Cisco Al POD for Enterprise Training and Fine-Tuning with Everpure Deployment Guide

Available Languages

Bias-Free Language

The documentation set for this product strives to use bias-free language. For the purposes of this documentation set, bias-free is defined as language that does not imply discrimination based on age, disability, gender, racial identity, ethnic identity, sexual orientation, socioeconomic status, and intersectionality. Exceptions may be present in the documentation due to language that is hardcoded in the user interfaces of the product software, language used based on RFP documentation, or language that is used by a referenced third-party product. Learn more about how Cisco is using Inclusive Language.

- US/Canada 800-553-2447

- Worldwide Support Phone Numbers

- All Tools

Feedback

Feedback

Published: March 2026

In partnership with:

![]()

![]()

About the Cisco Validated Design Program

The Cisco Validated Design (CVD) program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, go to: http://www.cisco.com/go/designzone.

Executive Summary

This document provides prescriptive step-by-step procedures for deploying a Cisco AI POD solution with Everpure FlashBlade//S for enterprise training and fine-tuning. The solution is based on one of several design options presented in the Cisco AI POD for Enterprise Training and Fine-Tuning Design Guide. The implementation details enable infrastructure engineers and AI/ML practitioners to quickly build, configure, and operationalize a high-performance AI cluster.

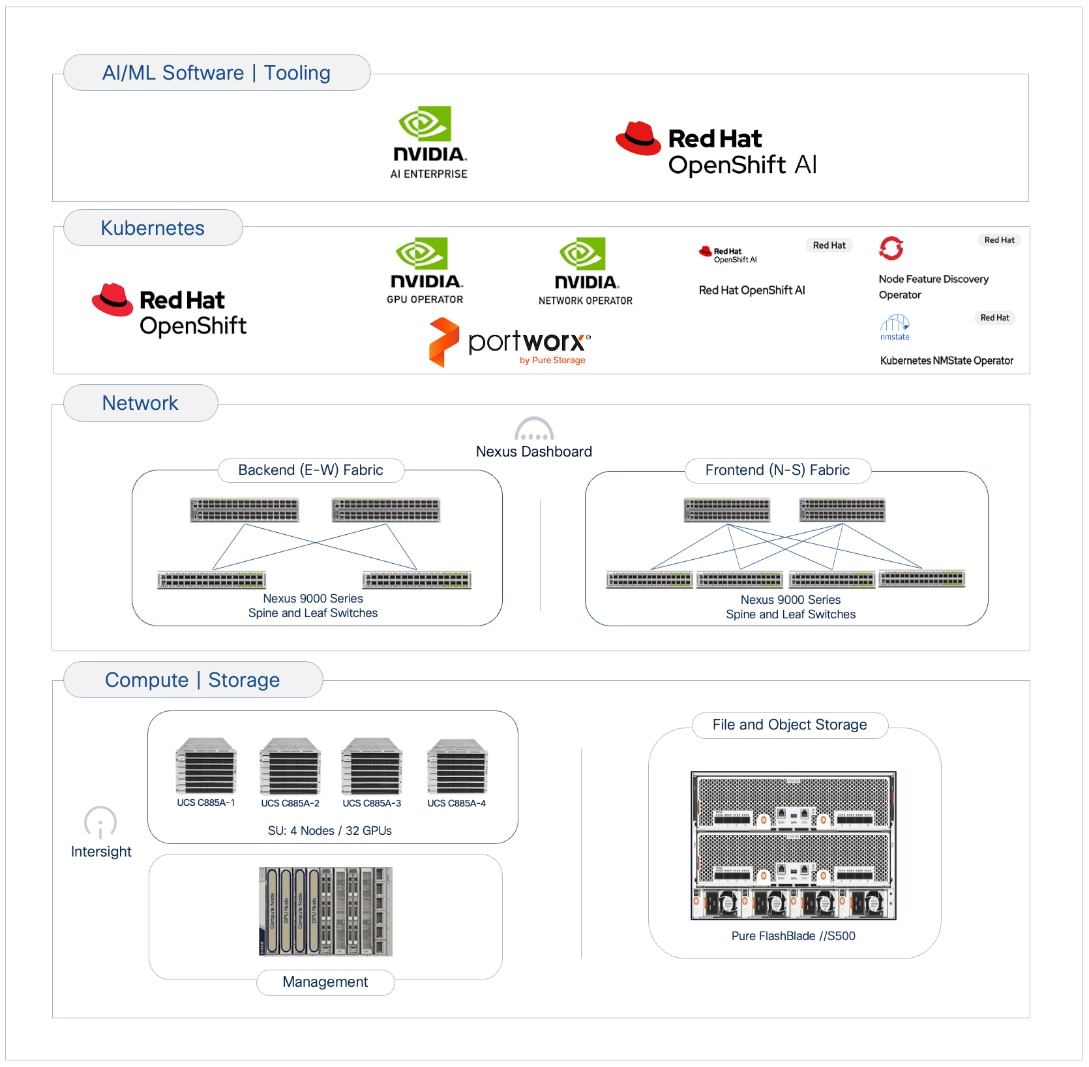

Cisco AI PODs are modular, validated infrastructure solutions that are designed to meet enterprise AI infrastructure requirements. These solutions integrate dense GPU platforms, high-performance networking, storage, enterprise-class Kubernetes, and MLOps to deliver a complete stack for enterprise AI initiatives. The architecture takes a building-block approach using Scale Unit Types, enabling organizations to start with deployments of 32-, 64-, or 128-GPU clusters. These foundational building blocks can then scaled incrementally and predictably to support 256, 512, or higher GPU clusters as requirements evolve.

This solution was built and validated in Cisco labs and consists of Cisco UCS C-Series GPU servers, specifically Cisco UCS C885A M8 servers, in a dual-fabric architecture. The designs include a backend (East-West) fabric optimized for low-latency GPU-to-GPU communication and a frontend (North-South) fabric for cluster management, services, storage I/O, and other connectivity. Both fabrics are deployed using pre-built, best-practice templates available in Cisco Nexus Dashboard. The solution also includes a Cisco UCS X-Series Direct-based cluster for management and other services, provisioned and managed using Cisco Intersight in the cloud.

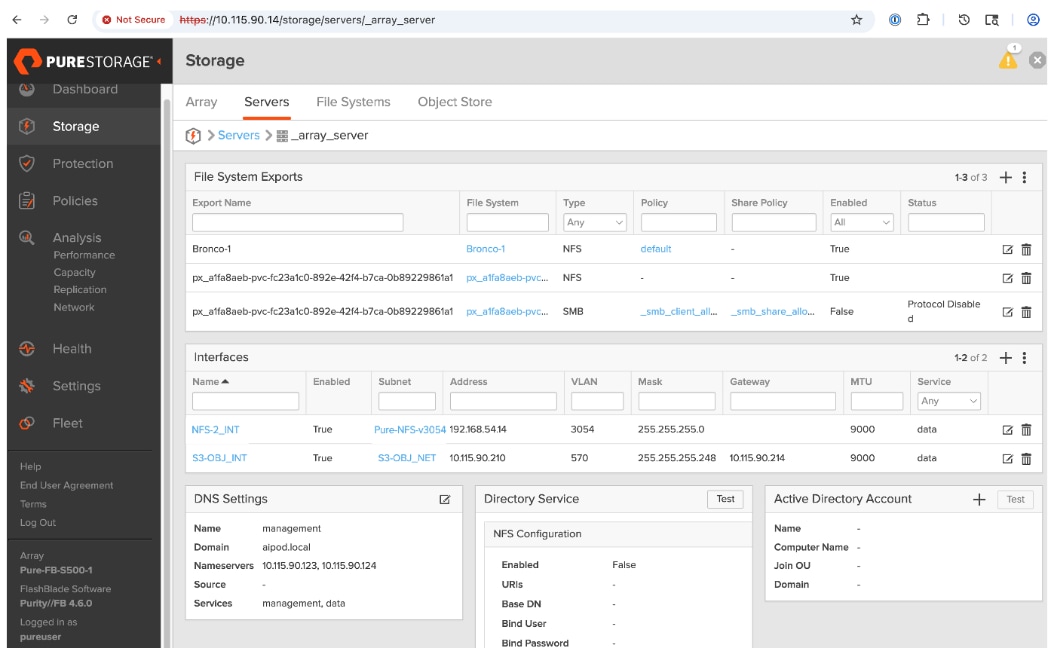

Storage is provided by Everpure FlashBlade//S, an all-flash, scale-out, file and object platform. The procedures detail the configuration of FlashBlade to support the AI data pipeline using both NFS-based file services for active training datasets and S3-compatible object storage to serve as a data and model repository. Portworx by Everpure, backed by NFS storage on Pure FlashBlade//S, is also included in the solution to provide persistent data services to containers natively in Kubernetes. This multi-protocol approach ensures the storage layer supports the entire data lifecycle, from initial ingestion to long-term archiving.

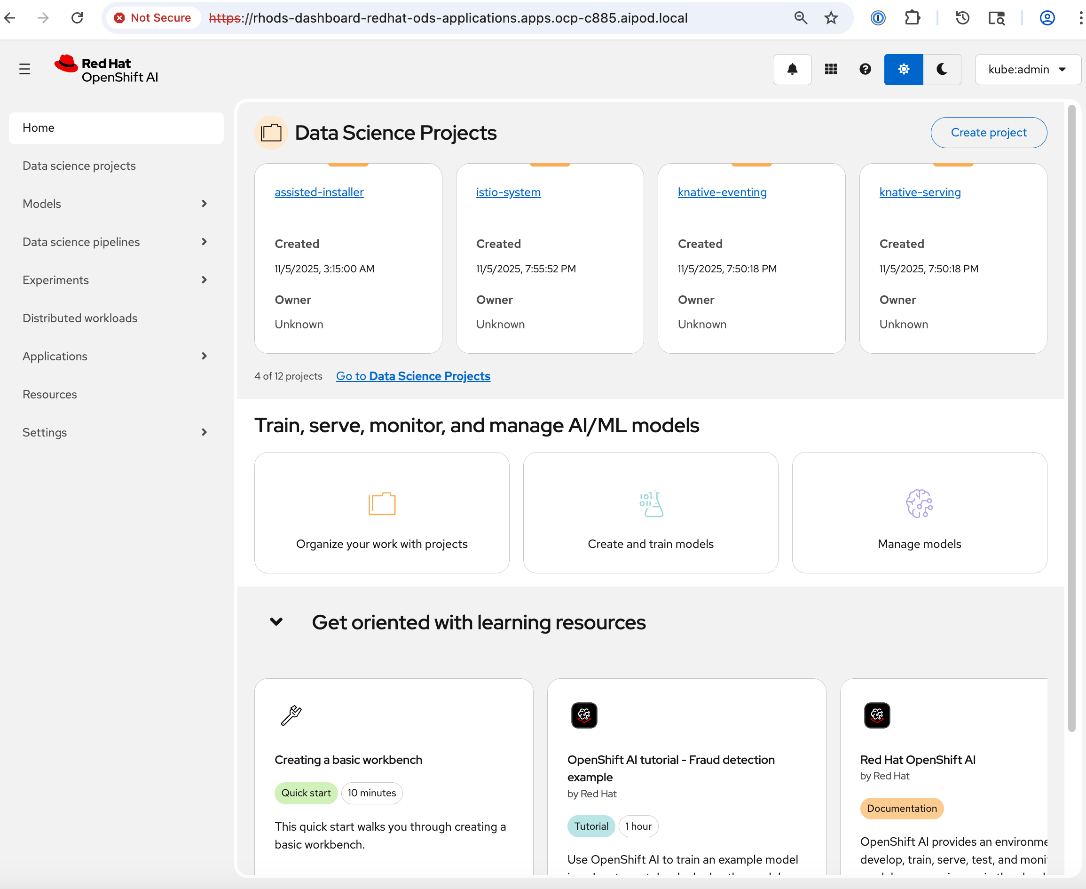

The solution supports both Linux and Kubernetes software stacks but was validated with Red Hat OpenShift to provide customers with a consistent, enterprise-class software stack for training that aligns with AI inference and non-AI environments that may already be in place. For organizations requiring a comprehensive development environment to accelerate AI initiatives, the solution also includes Red Hat OpenShift AI as an MLOps platform to manage the lifecycle of AI initiatives, from model development to production deployment.

Centralized management is provided through Cisco Intersight and Nexus Dashboard, enabling automation and operational visibility. Intersight manages the complete lifecycle of the Cisco UCS X-Series management cluster while providing hardware visibility and monitoring for the UCS dense GPU nodes. The solution validation includes functional verification and platform-level validation using the NVIDIA Collective Communications Library (NCCL) to ensure the cluster performs as expected.

This deployment guide, along with the Cisco AI POD for Enterprise Training and Fine-Tuning Design Guide and the associated GitHub repository, serves as the full set of deliverables for this AI POD CVD. To access all Cisco AI POD CVDs, navigate to: Cisco Validated Design Zone for AI-Ready Infrastructure.

Solution Overview

This chapter contains the following:

AI/ML is rapidly transforming enterprise organizations, driving a need for reliable, scalable, and secure AI-ready infrastructure. This guide provides detailed procedures for deploying a complete AI infrastructure stack for model training and fine-tuning in enterprise data centers.

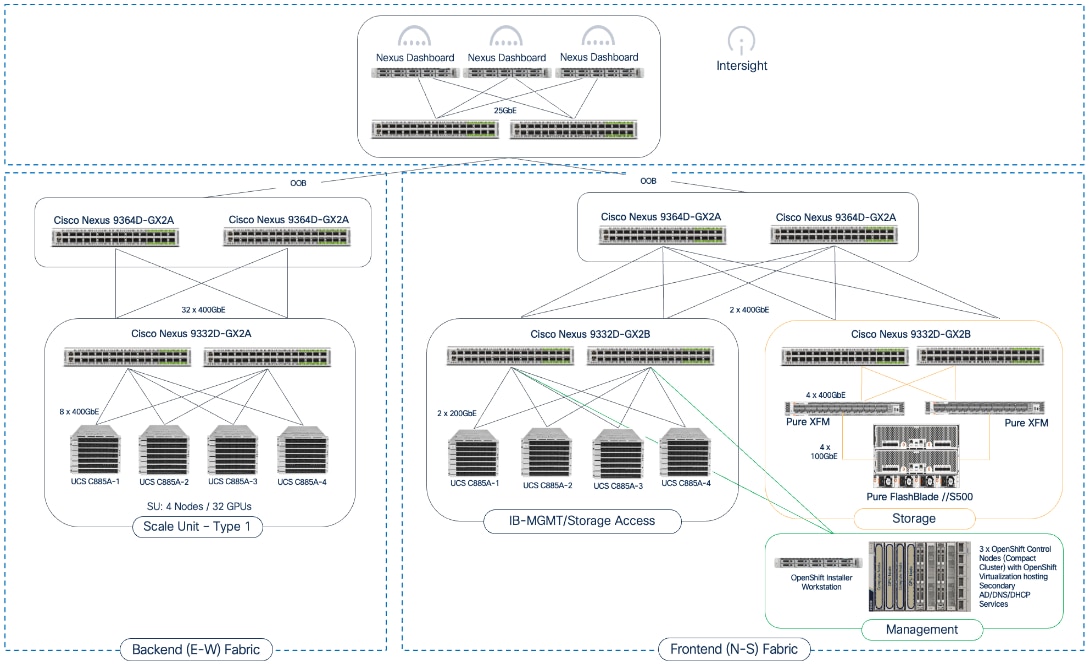

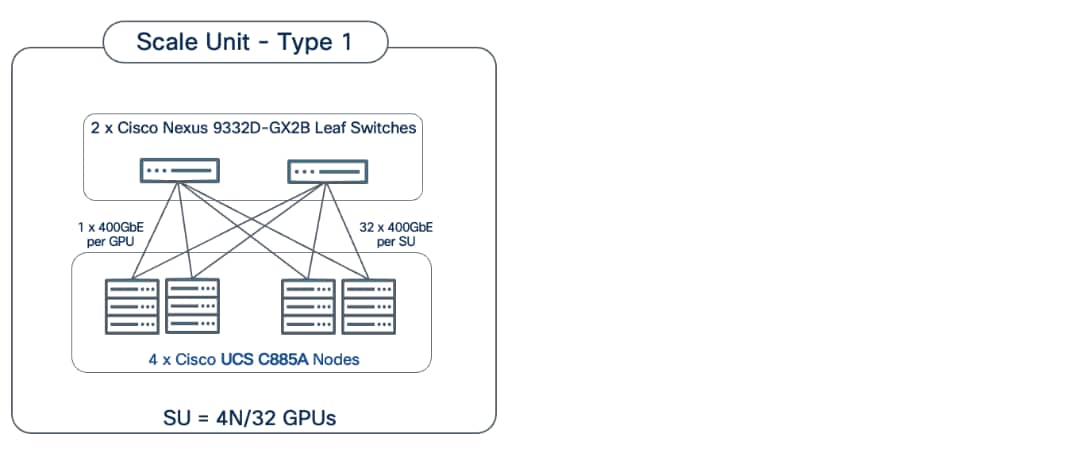

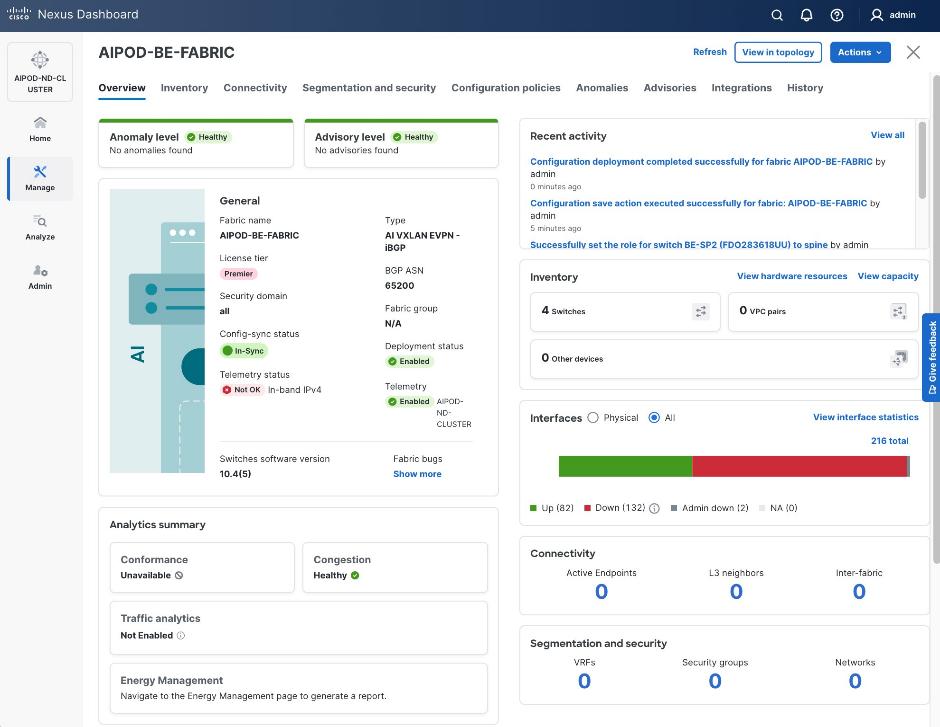

The solution in this guide consists of a 4-node, 32-GPU cluster utilizing Scale Unit – Type 1 as the foundational building block. This configuration was validated using Cisco UCS C885A M8 servers with NVIDIA H200 GPUs, connected to two network fabrics and integrated with high-performance file and object storage provided by Everpure FlashBlade//S. Cisco Nexus Dashboard (ND) is used to provision and manage the two fabrics (Backend/East-West, Frontend/North-South), built using Cisco Nexus 9000 Series switches.

This guide is for IT architects, infrastructure engineers, and AI/ML practitioners responsible for the deployment, configuration, and operation of AI/ML infrastructure in enterprise data centers. Basic understanding of Cisco UCS compute platforms, Cisco Nexus networking, enterprise storage concepts, and Kubernetes is assumed.

While the AI POD Design Guide details the architecture and design options, this CVD document provides the configuration procedures for building and operationalizing a specific Cisco AI POD design. By following the procedures in this document, engineering teams can build and quickly operationalize an AI cluster that aligns with established best practices.

The Cisco AI POD solution in this document is a fully integrated solution with high-density compute, high-performance networking, scale-out storage, and a robust software stack, designed for Enterprise Training and Fine-Tuning. This guide provides detailed implementation guidance for deploying a 32-GPU cluster and covers the configuration of compute, network, storage, and the software stack required to support distributed training and fine-tuning workloads. It also includes comprehensive validations to ensure that the integrated subsystems are functioning as expected. The integrated solution consists of the following components:

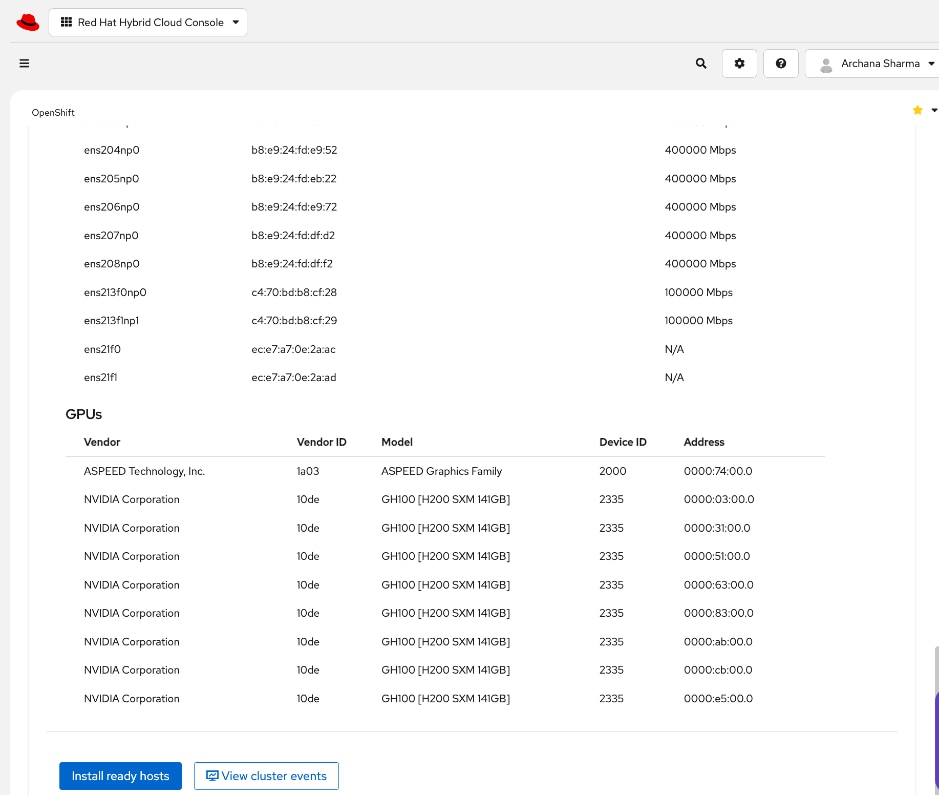

● Cisco UCS C885A M8 Servers: Four nodes, each equipped with eight NVIDIA H200 GPUs (SXM) and dual AMD EPYC processors. These servers provide the primary compute power for distributed training and fine-tuning. Within the server, GPUs are interconnected via NVIDIA NVLink, delivering 900 GB/s of bidirectional bandwidth per node.

● Cisco UCS X-Series Direct: A dedicated management cluster used to host the control plane and management services for the Red Hat OpenShift cluster with UCS C885A worker nodes.

● Network: Dual-fabric architecture (Backend and Frontend) utilizing Cisco Nexus 9000 Series switches, managed and deployed using Cisco Nexus Dashboard.

● Backend (East-West) Fabric: Four Cisco Nexus 9332D-GX2B switches connected in a two-tier spine-leaf Clos-based topology. This fabric provides a dedicated, non-blocking 400GbE environment for GPU-to-GPU communication via RoCEv2.

● Frontend (North-South) Fabric: Four Cisco Nexus 9332D-GX2B switches, two as compute + management leaf switches and two as dedicated storage leaf switches. This fabric provides connectivity for cluster management, storage I/O, and user access.

● Cisco Intersight: Provides hardware health monitoring and visibility for the Cisco UCS C885A M8 GPU nodes while managing the complete lifecycle of the Cisco UCS X-Series management cluster.

● Cisco Nexus Dashboard: Serves as the centralized automation and operations platform for the network fabrics in the solution, providing full life-cycle management and best-practice based templates to quickly deploy and stand up both the backend and frontend fabrics.

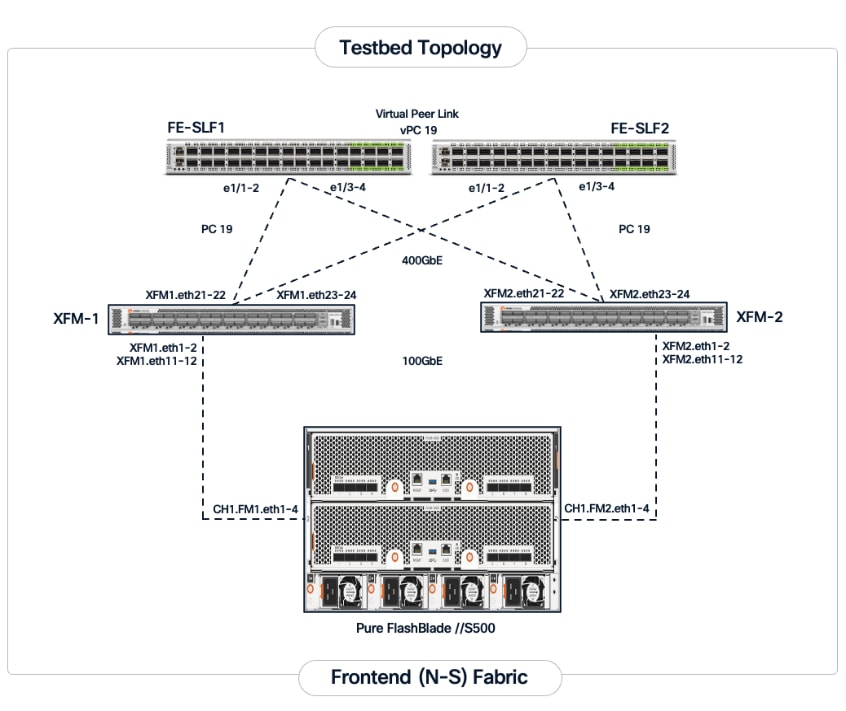

● Everpure FlashBlade//S500: An all-flash, scale-out, unified file and object storage platform providing up to 6PB of storage capacity per system. In this CVD, FlashBlade is configured to provide high-performance NFS file services for active training datasets and S3-compatible object storage for data ingestion and to serve as a model and pipeline-artifacts repository.

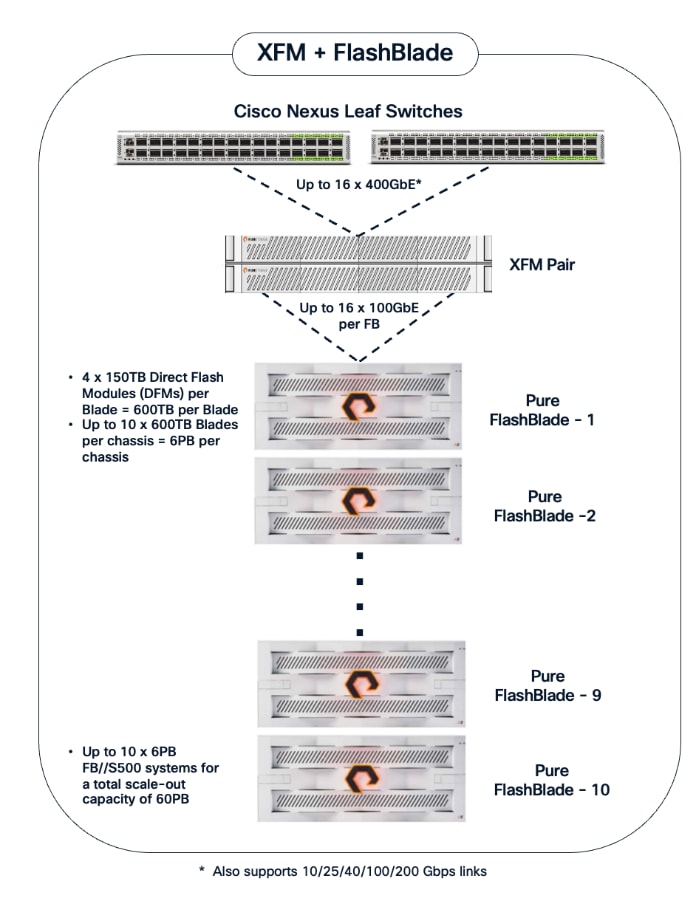

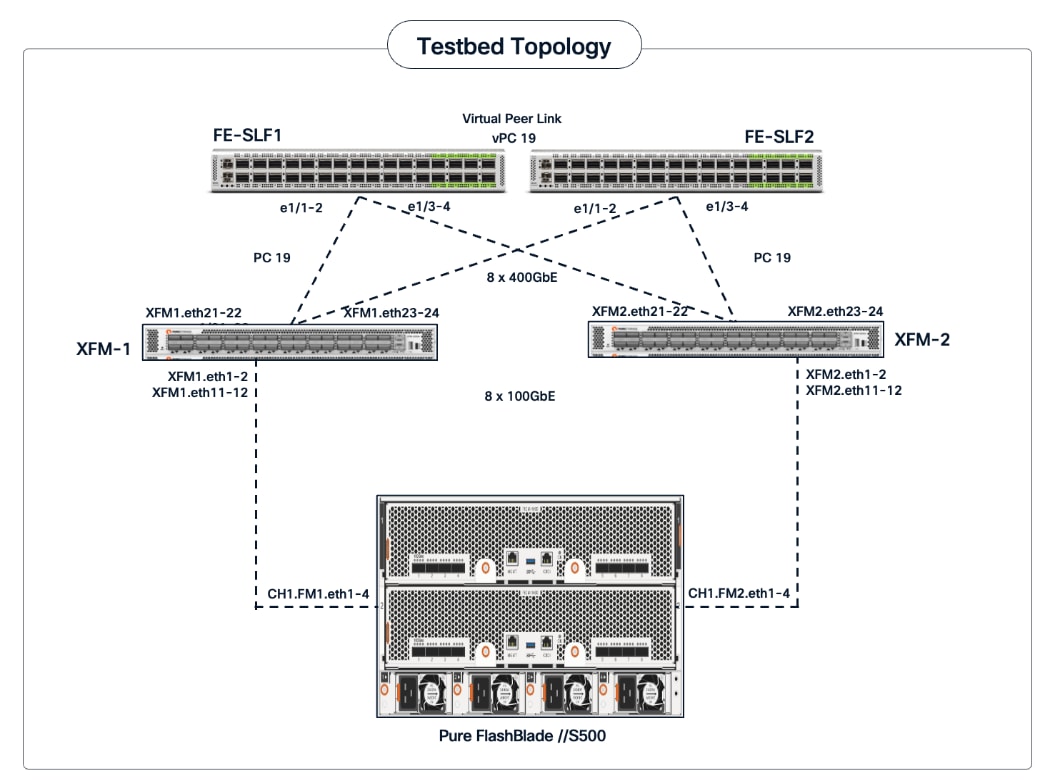

● Everpure eXternal Fabric Modules (XFM): A pair of 400GbE fabric modules is deployed as an aggregation layer to connect the FlashBlade to the Nexus storage leaf switches in the frontend fabric. XFM is Everpure's dedicated, multi-chassis fabric component for building scalable FlashBlade systems. The XFM pair provides a high-bandwidth internal network and Top-of-Rack (ToR) uplinks to enable a scale-out architecture capable of supporting up to 10 FlashBlade chassis and a total storage capacity of 60PB. The tight integration with Purity//FB enables the entire system to be managed as a single, unified system regardless of the number of chassis deployed.

● Portworx by Everpure: Kubernetes-native data platform that provides a complete container data management solution. It provides file services natively to Kubernetes containers for persistent storage, backup, disaster recovery and data security.

● Red Hat OpenShift: The solution is validated using Red Hat OpenShift Container Platform, providing a secure, enterprise-class Kubernetes-native environment for containerized AI workloads.

● NVIDIA AI Enterprise (NVAIE): A comprehensive suite of AI software that includes optimized drivers, CUDA libraries, and the NVIDIA Collective Communications Library (NCCL) required for performant distributed training.

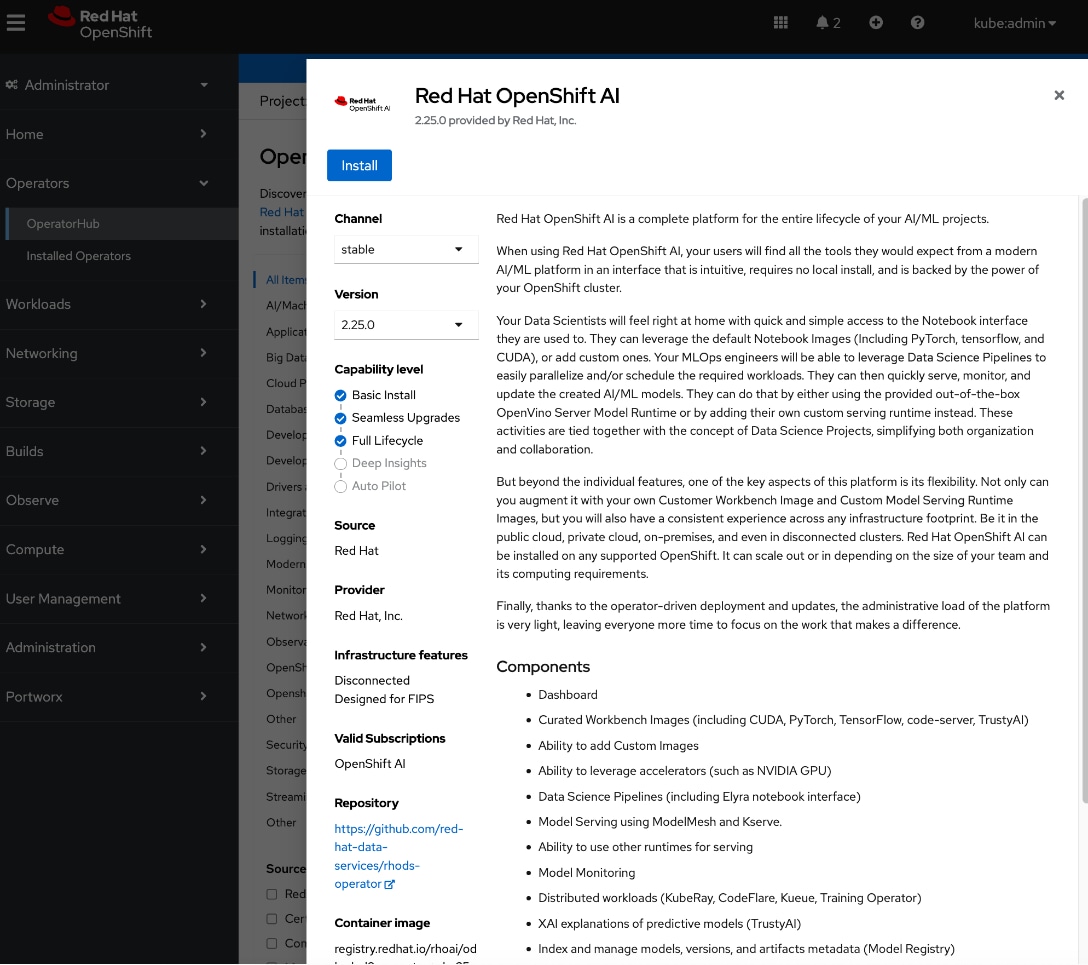

● Red Hat OpenShift AI: Integrated to provide a collaborative development environment. It enables data scientists to manage the entire lifecycle of AI initiatives, from data preparation and model development to pipeline orchestration.

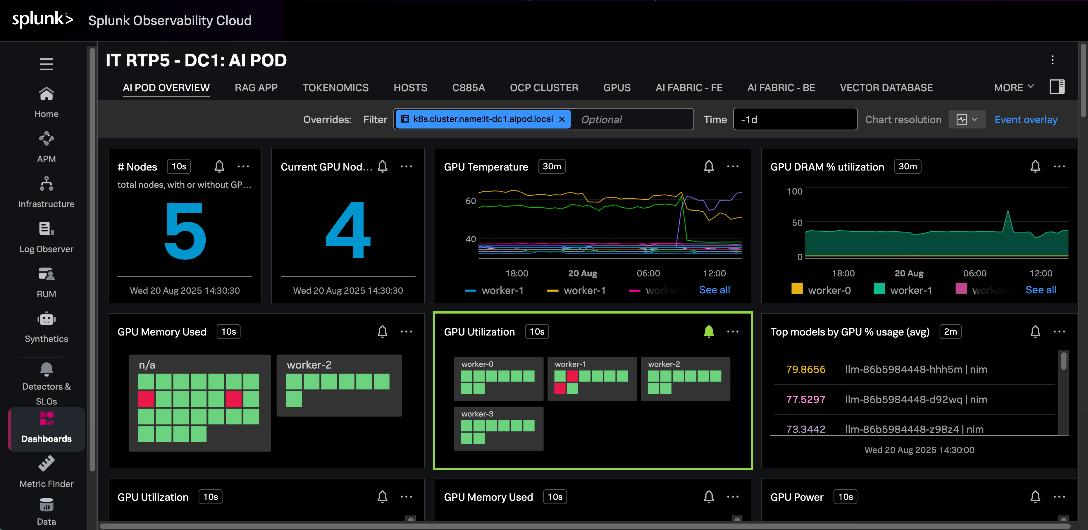

● Splunk Observability Cloud: Delivers full-stack visibility by correlating metrics and telemetry across the compute, network, and storage layers to accelerate troubleshooting.

Solution Design

This chapter describes the specific infrastructure design that was built and validated in Cisco labs to provide a high-performance environment for enterprise model training and fine-tuning.

This chapter contains the following:

The Cisco AI POD architecture is a modular, building-block design using Scale Unit Types that can be predictably and incrementally scaled to support large GPU clusters as described in the Cisco AI POD for Enterprise Training and Fine-Tuning Design Guide. Figure 1 illustrates the logical infrastructure stack, highlighting the key components and layers integrated and validated in this solution.

This section provides the specific hardware and software components used in this deployment.

| Component (PID) |

Quantity |

Notes |

| UCS GPU Cluster |

||

| Cisco UCS C885A M8 Servers |

4 Nodes |

|

| NVIDIA H200 SXM5 GPUs |

32 GPUs (total), 8 GPUs per server |

141GB of HBM3e memory each |

| NVIDIA ConnectX-7 NICs |

8 NICs per server |

1x 400GbE NIC > backend fabric |

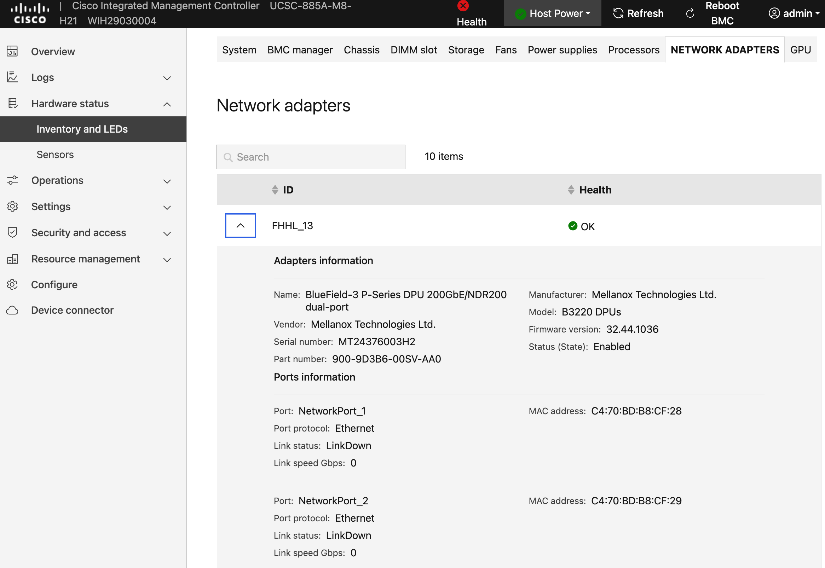

| NVIDIA BlueField-3 NICs |

1 NIC per server |

2x 200GbE NIC > frontend fabric |

| Backend Fabric |

||

| Cisco Nexus 9332D-GX2B |

2 Spine, 2 Leaf Switches |

400GbE fabric |

| Frontend Fabric |

||

| Cisco Nexus 9364D-GX2A |

2 Spine Switches |

400GbE from Spine to Leaf |

| Cisco Nexus 9332D-GX2B |

2 Compute, 2 Storage Leaf Switches |

200GbE to UCS, 400GbE to storage |

| UCS Management Cluster |

||

| Cisco UCS X-Series Direct |

|

|

| UCS X9508 Chassis (UCSX-9508) |

1 |

|

| UCS X Direct 100G (UCSX-S9108-100G) |

2 |

|

| UCS X210c M7 Servers (UCSX-210C-M7) |

3 OpenShift Control Nodes |

|

| VIC 15231 MLOM (UCSX-ML-V5D200G) |

3 (2x100G mLOM) |

To connect to frontend fabric |

| Storage - Unified File and Object |

||

| Everpure FlashBlade//S500 |

1 |

|

| Everpure XFM-8400R2 |

2 |

Fabric modules providing 400GbE aggregation |

| Software |

||

| Red Hat OpenShift |

|

Workload Orchestrator |

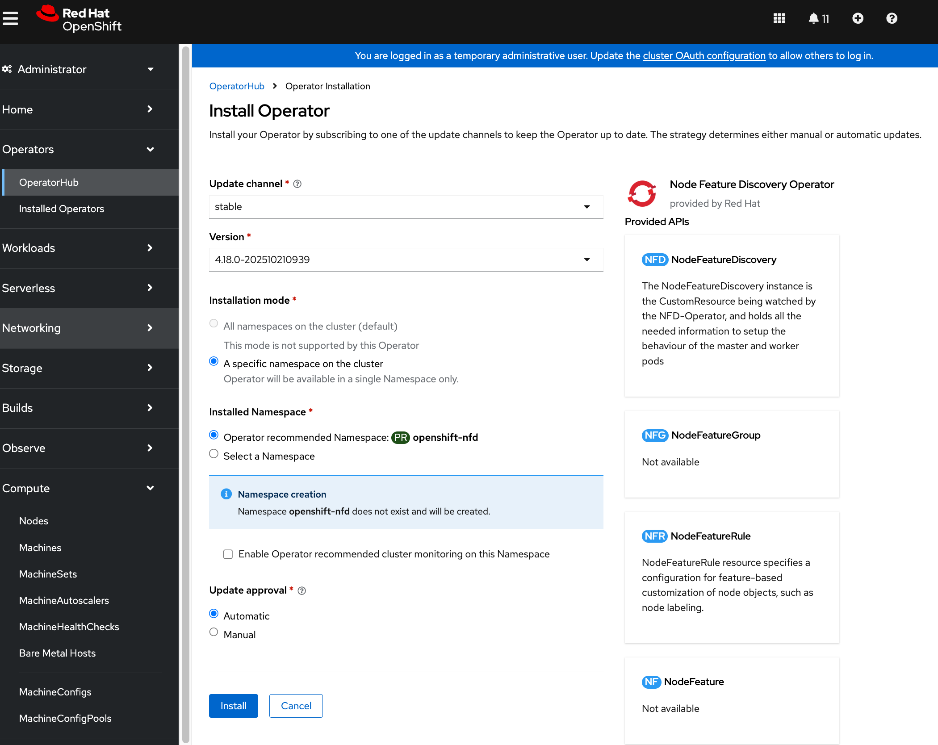

| Red Hat NFD Operator |

N/A |

|

| NVIDIA GPU Operator |

N/A |

|

| NVIDIA Network Operator |

N/A |

|

| Portworx Enterprise (Operator) |

N/A |

Portworx by Everpure |

| Red Hat NMState Operator |

N/A |

|

| Red Hat OpenShift AI Operator |

N/A |

|

| NVIDIA AI Enterprise (NVAIE) |

N/A |

|

| Red Hat OpenShift AI |

N/A |

MLOps Platform |

| Cisco Nexus Dashboard |

3 |

3-node physical cluster |

| Cisco Intersight |

N/A |

SaaS platform |

| Splunk Observability Cloud |

N/A |

SaaS platform |

The solution architecture consists of two independent fabrics, the backend and frontend, implemented as a two-tier spine-leaf (Clos) topology to ensure predictable, low-latency communication across the cluster. Both fabrics utilize an MP-BGP VXLAN EVPN architecture, providing flexible Layer 2 and Layer 3 overlays across a high-performance IP underlay.

The fabrics are centrally managed by Cisco Nexus Dashboard, while Cisco Intersight is used for the Cisco UCS compute servers. Figure 2 illustrates the end-to-end solution topology that was built and validated in Cisco labs.

The key building blocks of this AI POD solution are:

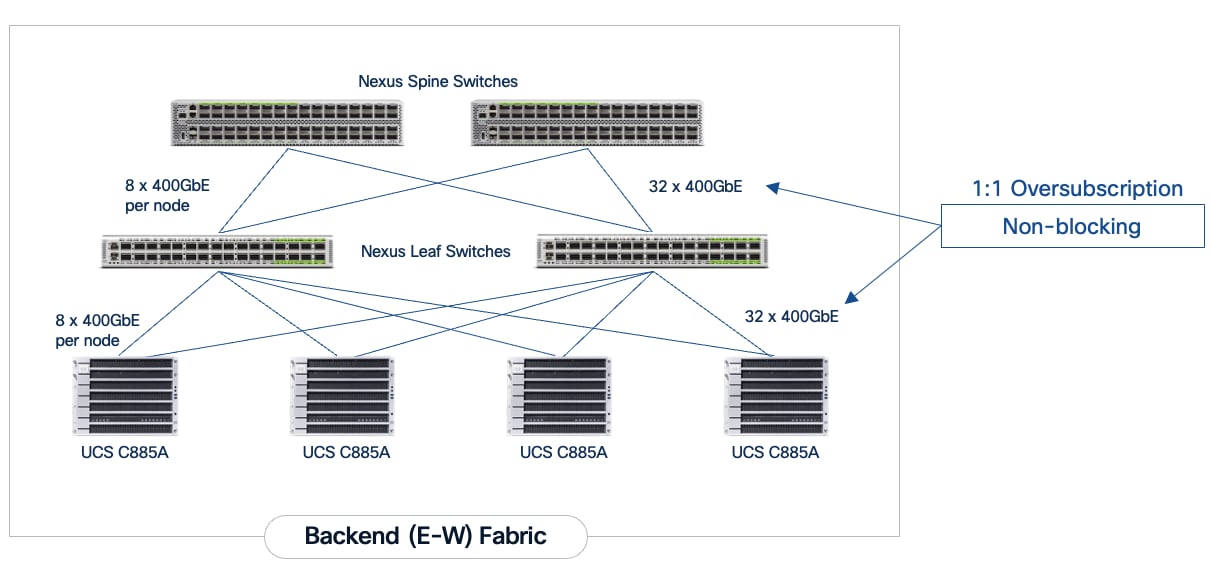

Backend (East-West) Fabric: A dedicated, non-blocking 400GbE fabric utilizing a spine-leaf Clos topology and optimized for inter-node GPU-to-GPU communication. For this CVD, the fabric was built using two Nexus spine switches and two leaf switches. This fabric can be scaled to support a 128-GPU cluster by adding leaf pairs, and further expansion by adding both spine and leaf pairs.

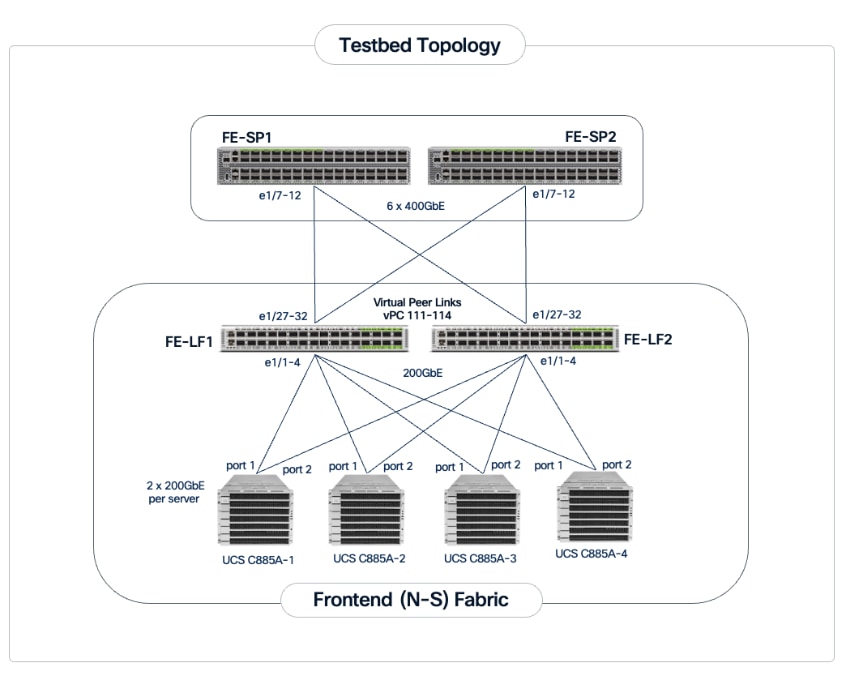

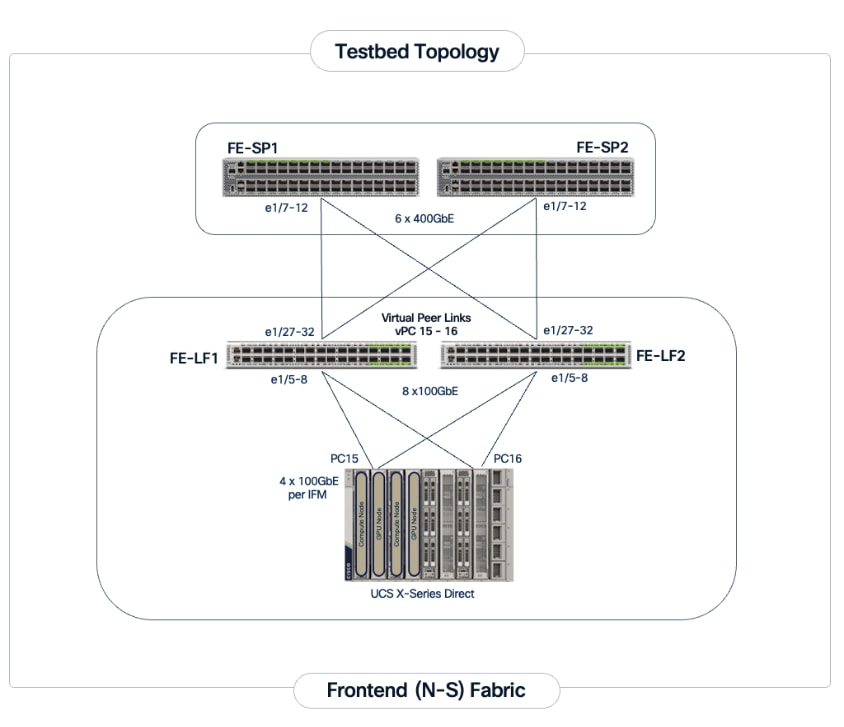

Frontend (North-South) Fabric: A 400GbE-capable spine-leaf fabric providing connectivity for all other services, including management, user access, storage, and other services. In this design, the fabric consists of two spine switches and four leaf switches as outlined below. This fabric can also be scaled as needed by adding or upgrading links or adding switch pairs.

● Compute/Management Leaf Pair: Provides 200GbE connectivity for Cisco UCS C885A nodes and 100GbE connectivity for the Cisco UCS X-Series Direct management cluster, utilizing multiple links in each case.

● Dedicated Storage Leaf Pair: Provides 400GbE connectivity to Everpure XFMs that connect to the FlashBlade//S storage in the solution. Multiple links are used to connect the leaf switches to the storage subsystem. This architecture enables storage to scale as GPU clusters expand to support enterprise needs.

The frontend fabric can also be scaled by adding switch pairs or by upgrading to higher-bandwidth links.

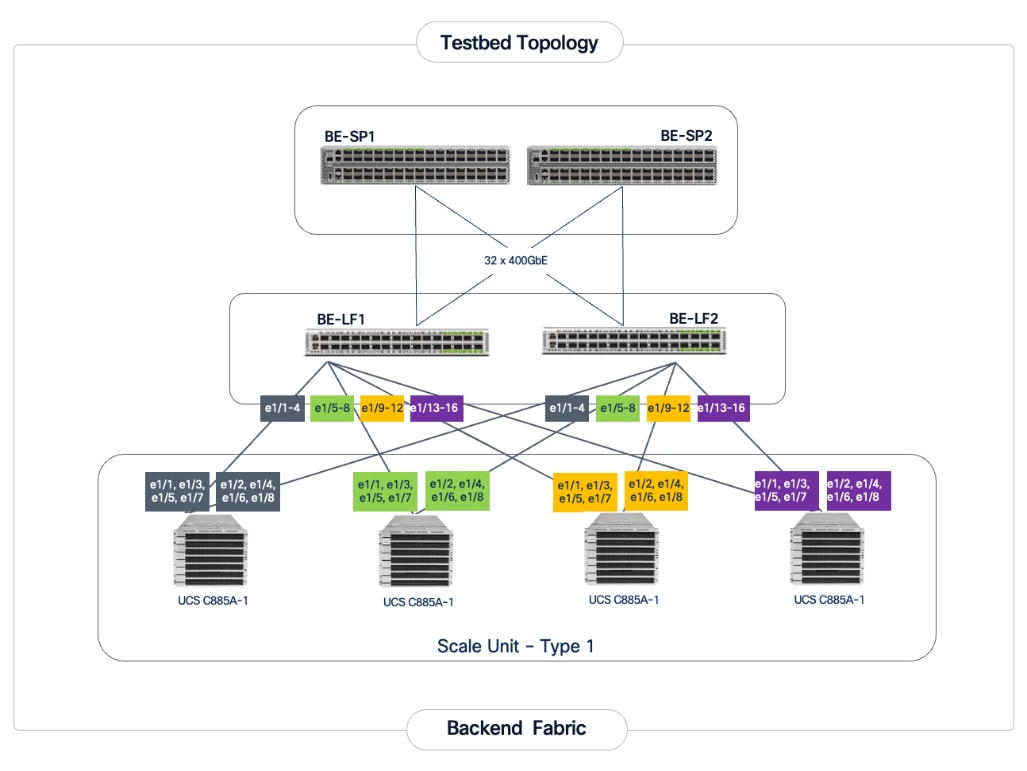

Scale Unit Types: The implementation in this CVD is based on Scale Unit – Type 1 (Figure 3), providing a 32-GPU cluster utilizing Cisco UCS C885A M8 servers with H200 GPUs, Cisco Nexus 9000 Series switches, Everpure FlashBlade//S, and Red Hat OpenShift integrated to deliver a unified infrastructure stack. To form this 32-GPU cluster, four Cisco UCS C885A M8 servers are connected to two 32-port, 400GbE Nexus 9332D-GX2B leaf switches in the backend fabric. Each server connects to the backend and frontend fabrics using eight East-West (E-W) NICs and one North-South (N-S) NIC, respectively.

This design can scale to a 128-GPU cluster by adding scale units of the same type, and to larger cluster sizes by adding both spine pairs and scale units. Note that scale unit types do not need to remain uniform during expansion, provided the backend fabric remains non-blocking and the switches have sufficient port density to support the configuration.

The physical connectivity of the AI POD is designed to maximize throughput and minimize latency while maintaining architectural predictability and consistency as the cluster scales to support evolving needs. This section details the cabling and connectivity implemented in this design.

Backend (East-West) Connectivity

The backend fabric is engineered to provide non-blocking connectivity between GPU servers in the cluster. This is achieved by ensuring that the number of uplinks from leaf-to-spine are equal in number and bandwidth to the number of downlinks from leaf-to-UCS server. As illustrated in Figure 4, the total number of 400GbE host-facing ports on the leaf switches (32 ports across 4 nodes) is matched by an equal number of 400GbE uplinks to the spine layer, ensuring that GPU synchronization traffic does not encounter oversubscription issues due to a lack of bandwidth.

Each Cisco UCS C885A node is connected to the two leaf switches in the fabric using a 2-way rail-optimized topology. To achieve this, the eight 400GbE connections from each server are distributed across the two leaf switches in the Scale Unit – Type 1. This ensures that GPUs of the same rank, across all nodes in the Scale Unit, connect to the same physical leaf switch, minimizing the network hops required for critical collective operations.

Table 2. Backend Fabric Connectivity

| From |

GPU NICs |

To |

Port Speed |

Connectivity |

| UCS C885A (1-4) |

NICs 1, 3, 5, 7 |

Leaf Switch 1 |

400GbE |

Access VLAN |

| UCS C885A (1-4) |

NICs 2, 4, 6, 8 |

Leaf Switch 2 |

400GbE |

Access VLAN |

| Leaf Switch 1 |

16 x Uplinks – evenly distributed across Spines |

Spine Switch 1-2 |

400GbE |

Routed |

| Leaf Switch 2 |

16 x Uplinks – evenly distributed across Spines |

Spine Switch 1-2 |

400GbE |

Routed |

Each Cisco UCS C885A server is equipped with eight NVIDIA ConnectX-7 (1 x 400GbE) NICs, one per GPU to connect to the backend fabric. NVIDIA BlueField-3 NICs can also be used for East-West connectivity.

Figure 5 illustrates the backend topology used to validate this solution.

Frontend (North-South) Connectivity

The frontend fabric provides connectivity for cluster management, storage, services, users, and other networks, both inside and outside the enterprise. The frontend fabric utilizes link aggregation and 802.1Q trunking to provide a resilient, high-bandwidth path for all traffic. Table 3 lists the endpoint connectivity used in the frontend fabric.

Table 3. Frontend Fabric Connectivity

| From |

To |

Connectivity |

Traffic Type |

| UCS C885A Nodes |

Compute Leaf Pair |

2-Port LACP Bond |

VLAN Trunk |

| UCS X-Series Direct |

Compute Leaf Pair |

Multi-Port LACP |

VLAN Trunk (Management/Control) Plane) |

| Everpure XFMs |

Storage Leaf Pair |

Multi-Port LACP Bond |

VLAN Trunk |

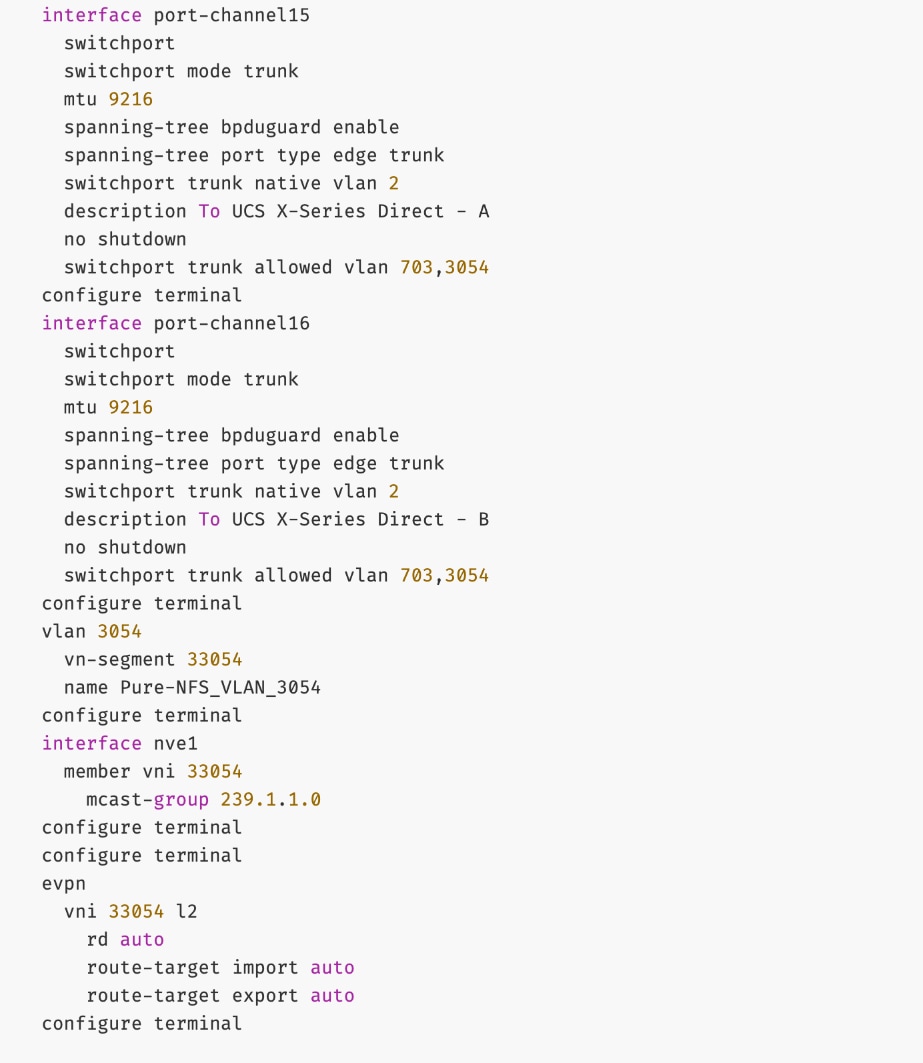

Each Cisco UCS C885A server is equipped with one NVIDIA BlueField-3 B3220 (2 x 200GbE) NIC for connecting to the frontend fabric. The two ports on the frontend NIC are bundled into an LACP port channel. This bond is configured as a VLAN trunk, allowing management and storage traffic to share the high-speed path while remaining logically isolated. Additional NICs can be added as needed to meet evolving requirements. The detailed connectivity from Cisco UCS C885A nodes to the compute/management leaf pair is shown in Figure 6.

The Cisco UCS X-Series management cluster connects to the same compute leaf switches using multiple 100GbE links (Figure 7). The frontend fabric also provides connectivity to Cisco Intersight in the cloud, which is used to deploy and manage this environment.

For a high-bandwidth connectivity to Everpure FlashBlade//S storage, Everpure XFMs are deployed as a storage aggregation layer to connect to a pair of dedicated storage leaf switches. The Everpure XFMs use a LACP port channel to connect to the storage leaf switches, with multiple 400GbE links to support concurrent, high-bandwidth access to NFS and S3-compatible object storage. The detailed connectivity from Pure FlashBlade to the storage leaf pair is shown in Figure 8.

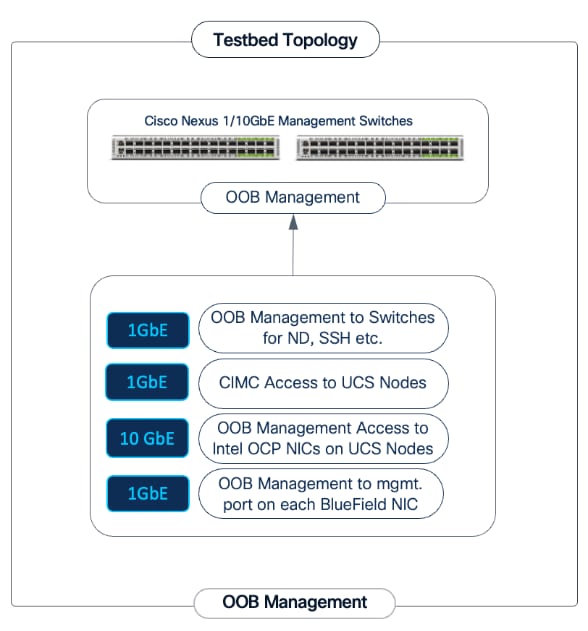

Out-of-Band Management Network

A dedicated 1GbE/10GbE Out-of-Band (OOB) management network is deployed to connect to all devices in the solution. This ensures that administrators have backup access to server consoles, management ports on NVIDIA BlueField-3 NICs, Nexus management ports, and storage controllers, independent of the state of the high-speed data fabrics. This network is also used for the initial provisioning of Cisco UCS C885A servers (via Cisco BMC, Redfish). Cisco provides a 48 port 10GBASE-T switch (Cisco Nexus 93108TC-FX3) that can be used for this network.

This section outlines the design of the compute, network, storage, and other subsystems in the validated AI POD solution.

Cisco Nexus Dashboard and Cisco Intersight are used to provide unified management for the network and compute infrastructure, respectively. A three-node Nexus Dashboard cluster is deployed on dedicated servers within the enterprise network. This cluster must be operational before the backend and frontend fabrics can be deployed.

Backend (East-West) Fabric

The backend fabric is a lossless, low-latency, high-throughput ethernet fabric, designed to support the stringent performance requirements of GPU-to-GPU RDMA communication. This fabric is exclusively for inter-node RDMA over Ethernet (RoCEv2) GPU communication. As stated earlier, the fabric is deployed as a two-tier spine-leaf Clos topology using a MP-BGP VXLAN EVPN architecture, which provides a multi-tenant environment with flexible support for both scalable Layer 2 and Layer 3 overlays across an IP underlay.

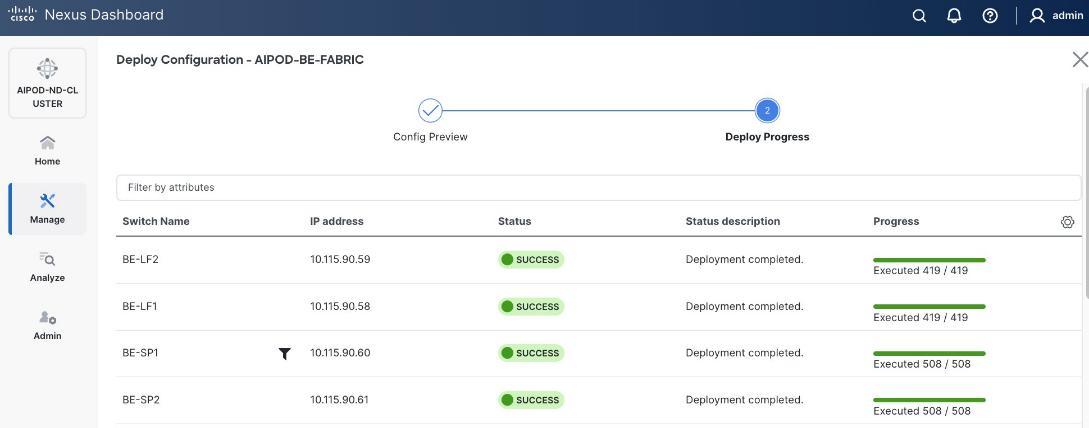

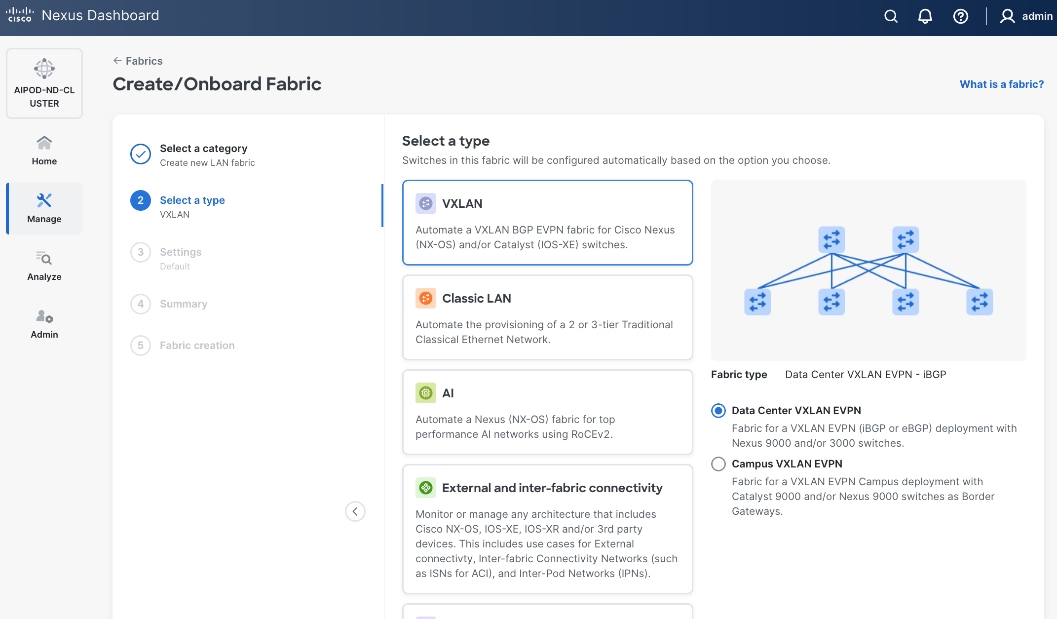

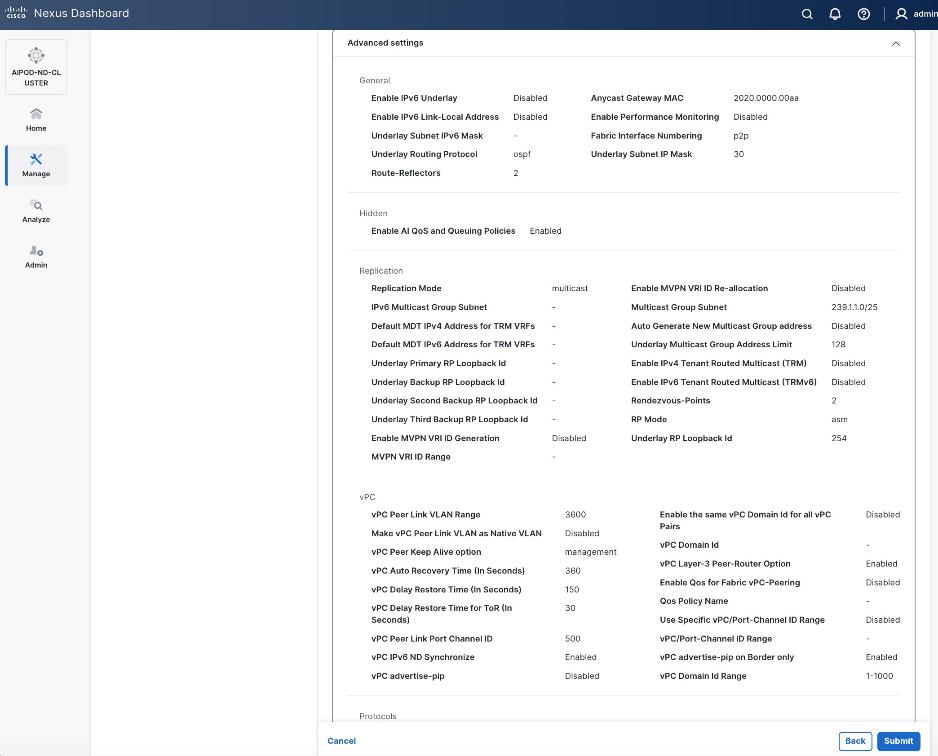

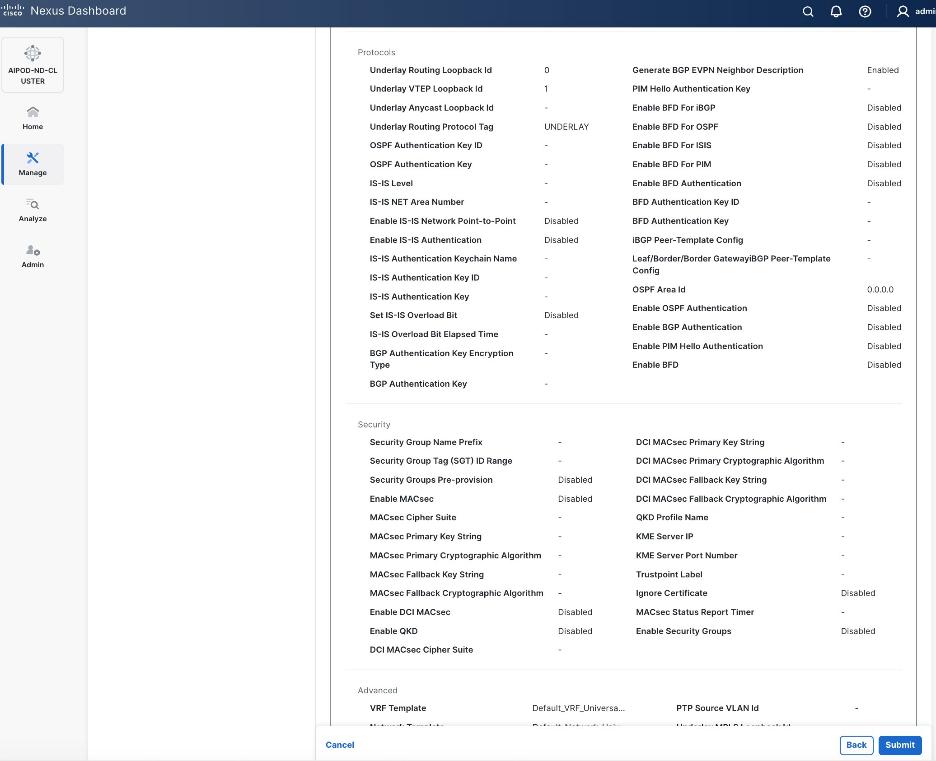

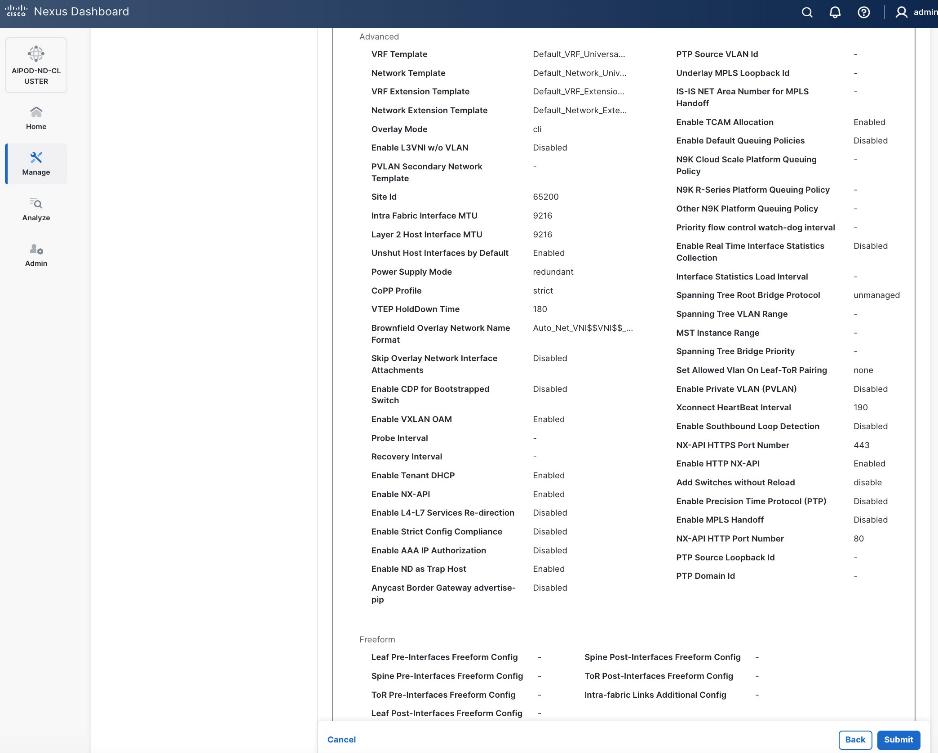

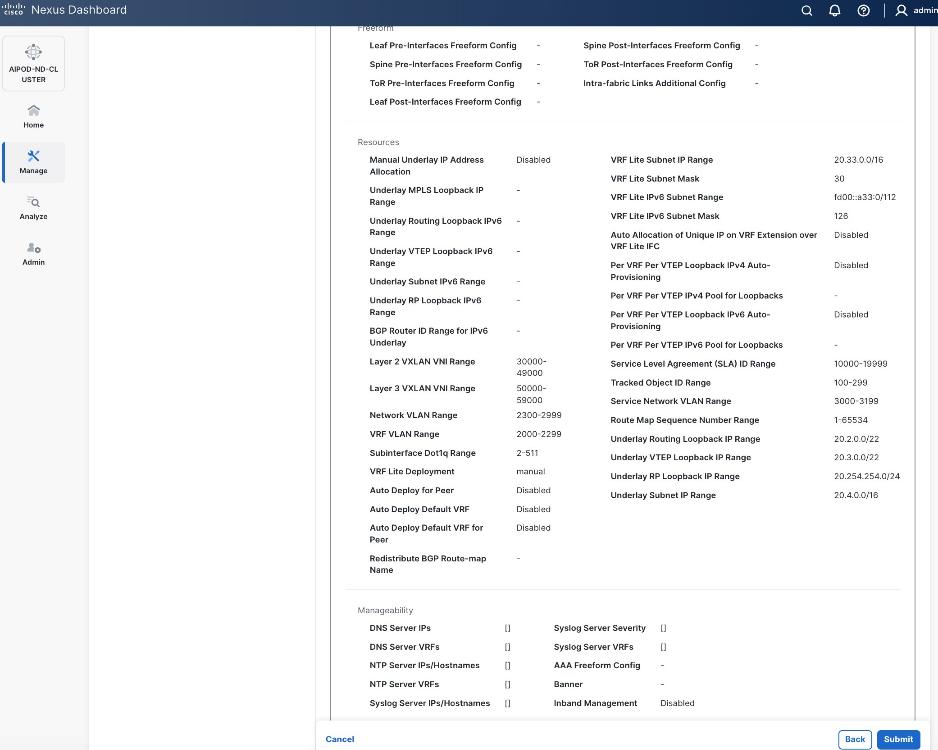

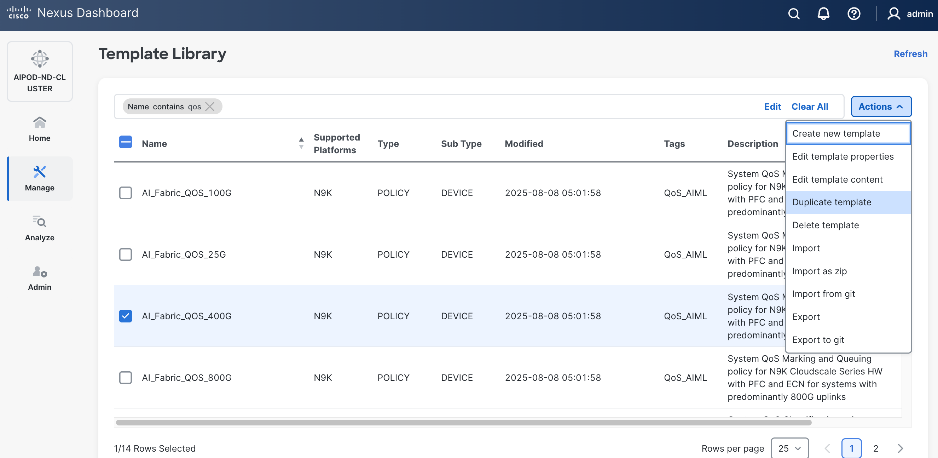

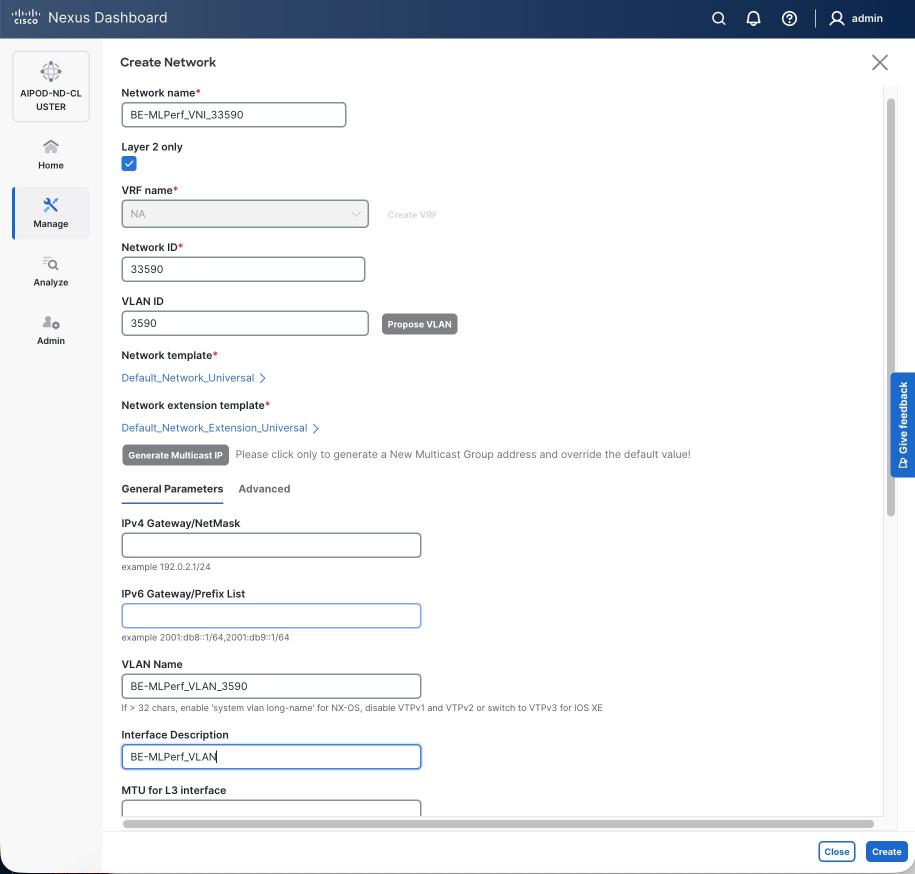

The fabric is deployed using a pre-built AI VXLAN EVPN fabric template available in Cisco Nexus Dashboard. This template implements a prescriptive, best-practice design for the backend fabric as shown in Figure 10. While the template provides a specific configuration, organizations retain flexibility to customize the template as needed.

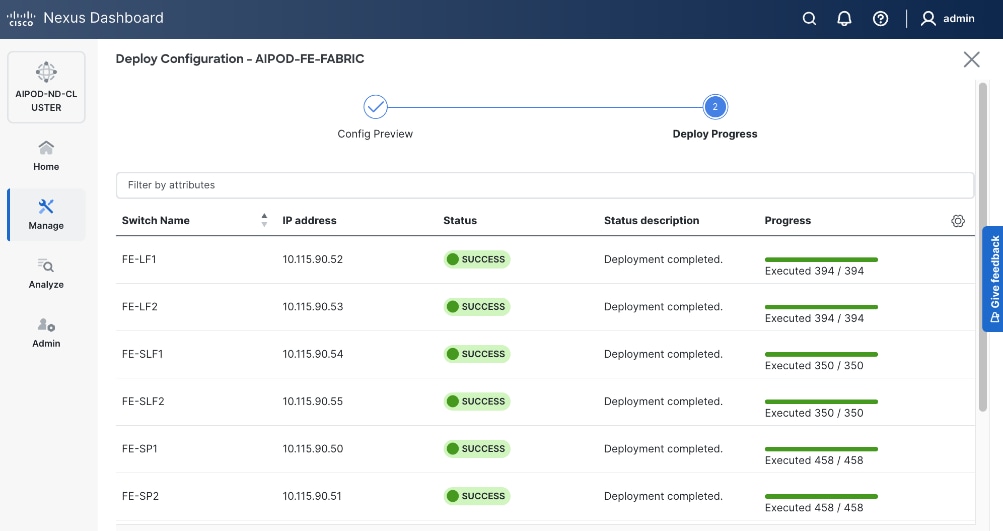

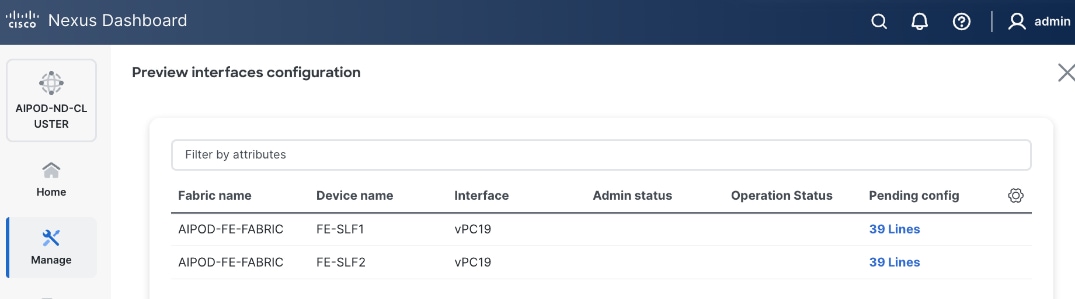

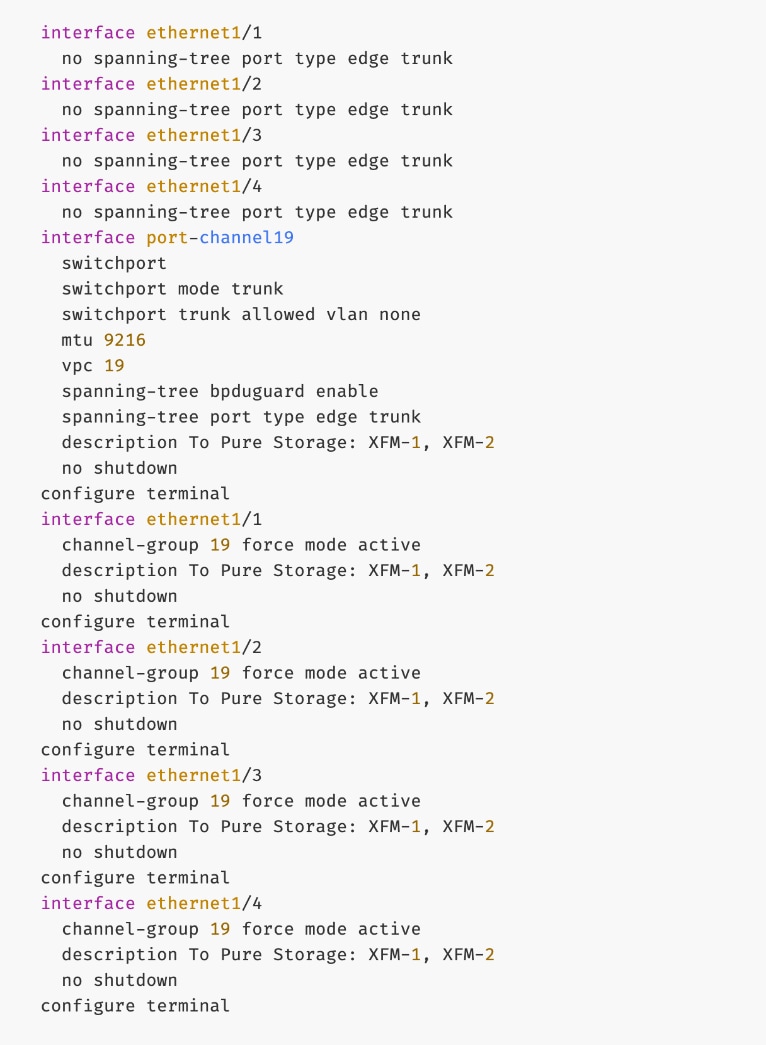

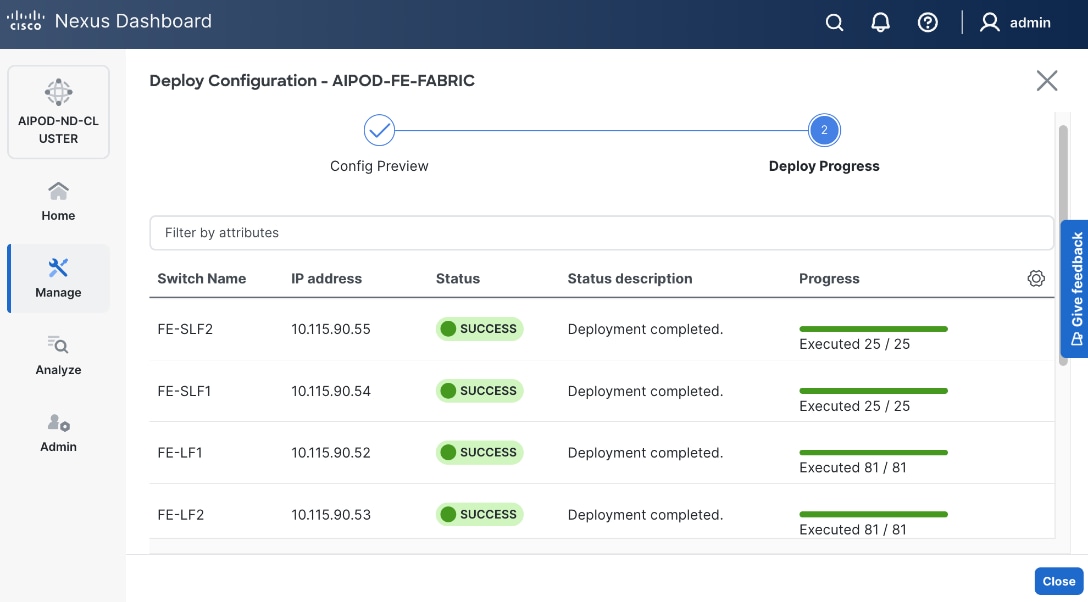

Once the switches are physically connected in a Clos-based spine-leaf topology, these templates enable the fabric to be provisioned and deployed quickly. In this design, the two-spine, two-leaf backend fabric would have required 400 lines (Figure 11) of manual configuration but it was deployed in minutes using the template workflow.

To enable inter-node GPU-to-GPU communication across the backend fabric, this design uses a Layer 2 overlay where all 32 GPUs in the cluster reside in the same VLAN. This is a viable option for enterprise deployments with multiple, smaller workloads that require a smaller subset of a larger cluster for any given workload. Alternatively, a Layer 3 overlay can also be used to enable this connectivity. The UCS GPU worker node configurations may need to change to align with the design used.

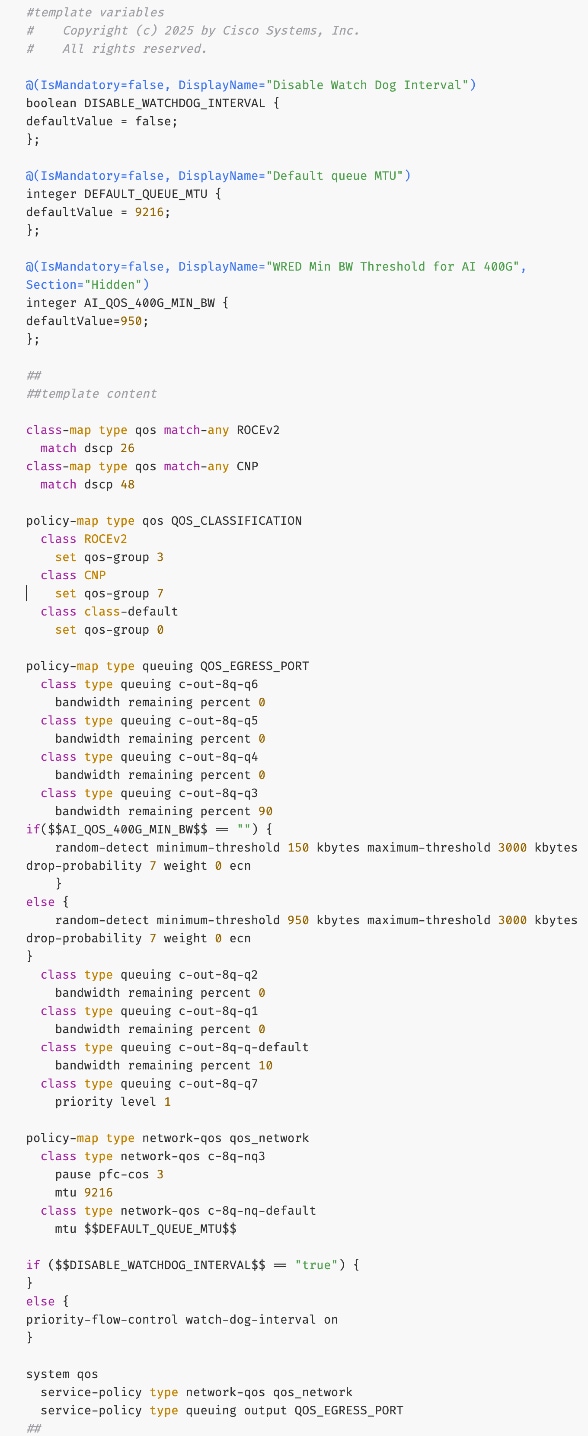

To create a lossless environment for RoCEv2 traffic, a default QoS policy is implemented by the deployed AI/ML template to enable the following QoS features on the backend fabric.

● Traffic Classification: A dedicated class-map (COS 3) is used to classify RoCEv2 synchronization traffic.

● Priority Flow Control (PFC): PFC is enabled on COS 3 to provide hop-by-hop flow control. In the event of congestion, this ensures that the switch can signal the upstream device to pause transmission, preventing packet loss.

● Explicit Congestion Notification (ECN): ECN is configured with specific WRED (Weighted Random Early Detection) thresholds. As buffers begin to fill, this enables the Nexus switches to mark packets signaling GPU endpoints to throttle their transmission rate before PFC is triggered, maintaining a smooth data flow.

● MTU: A global MTU of 9000 (Jumbo Frames) is applied across all links in the fabric to ensure large AI data packets are switched efficiently without fragmentation.

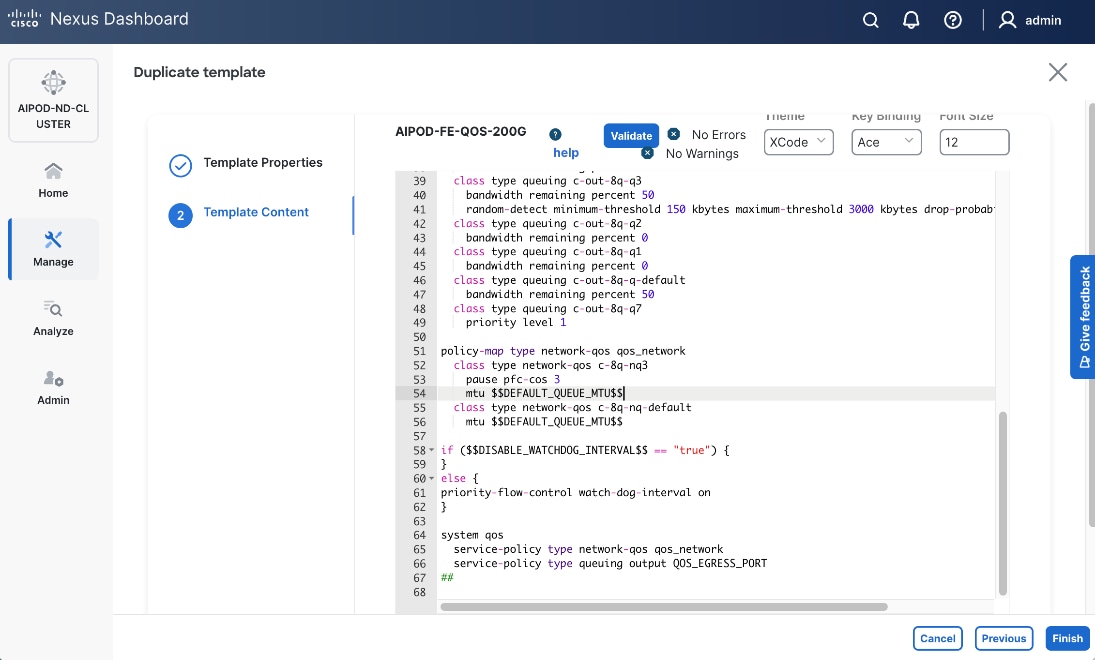

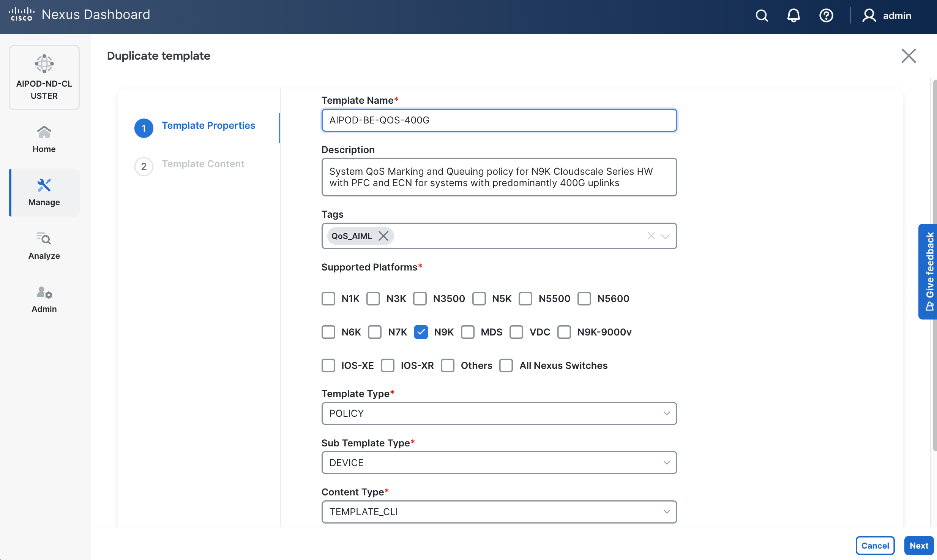

In this design, the default QoS policy within the deployed template was modified as listed:

● MTU for PFC3 traffic changed from 4200 to 9216

● QoS Bandwidth Allocation for the RoCEv2 queue was adjusted to 90 percent since the backend fabric primarily carries GPU-to-GPU RDMA traffic. The remaining bandwidth is allocated to control, management, and monitoring traffic.

Frontend (North-South) Fabric

The frontend fabric provides UCS GPU nodes with connectivity to management, services, storage, and to other networks, both within and external to the enterprise. In a hybrid deployment, inferencing traffic from users and application also use this network for reachability to AI models hosted on the UCS GPU nodes.

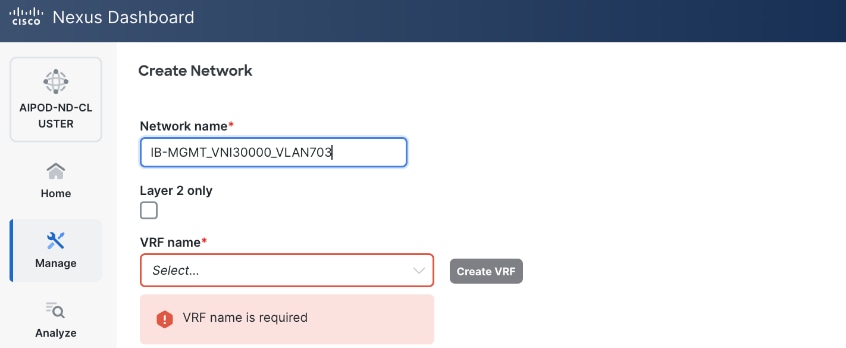

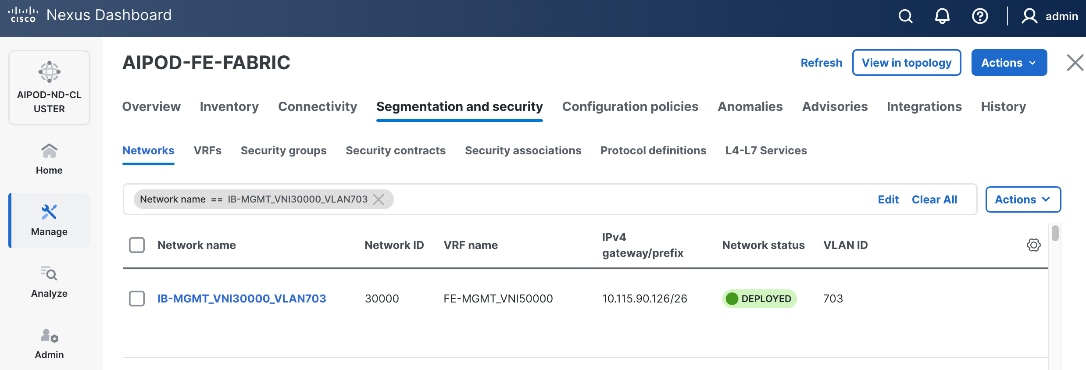

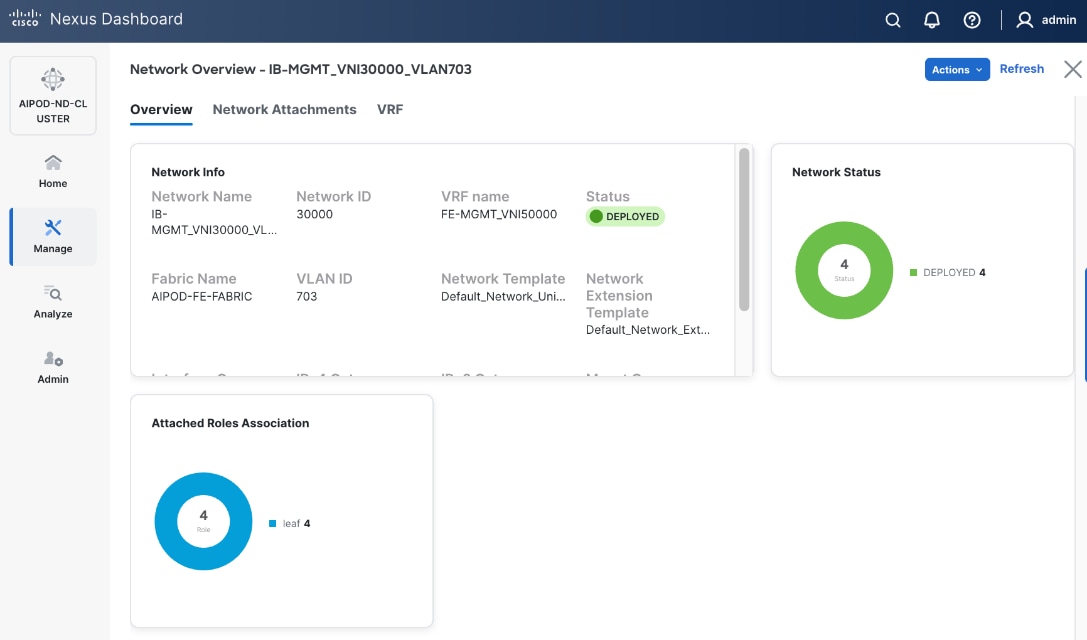

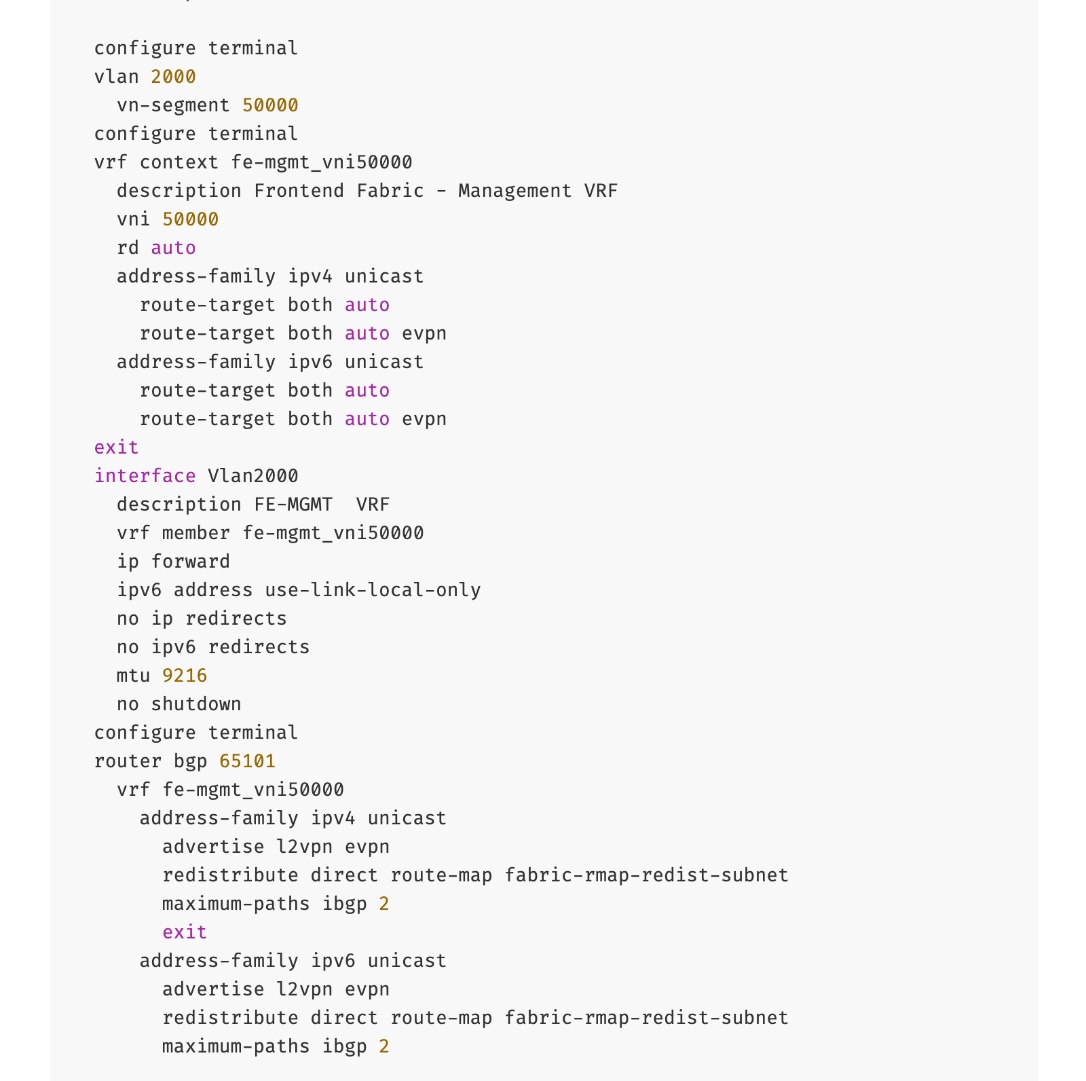

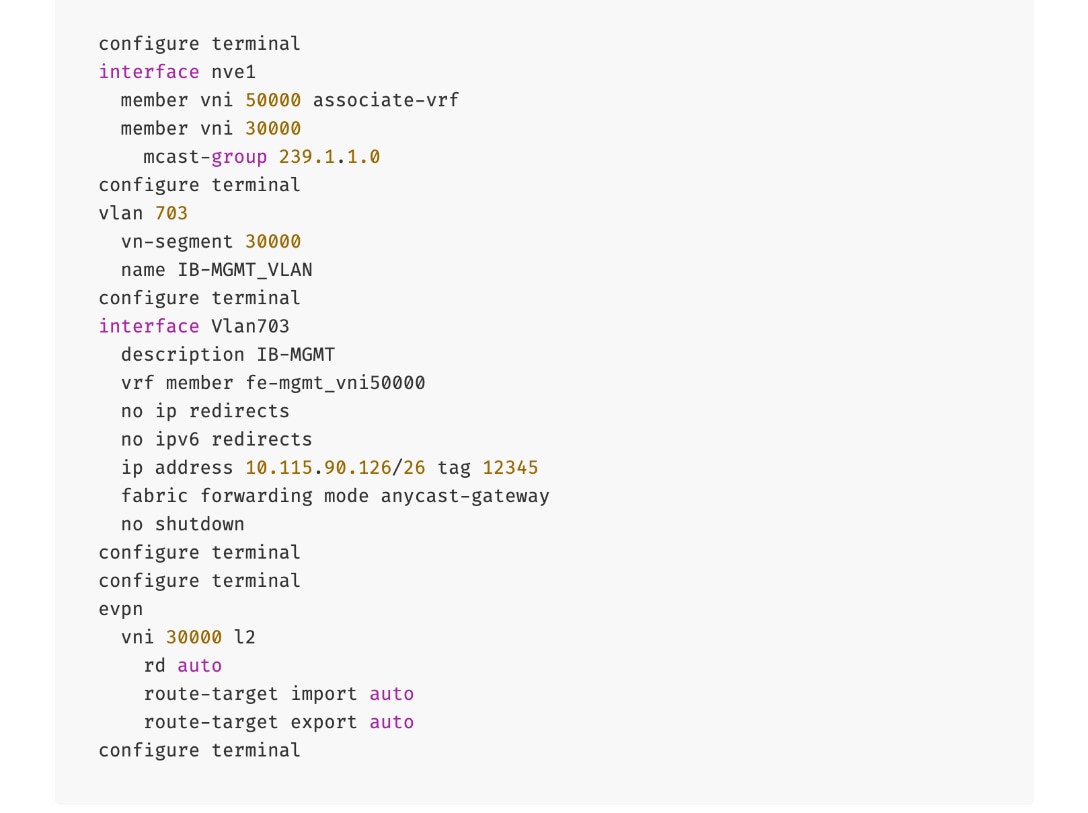

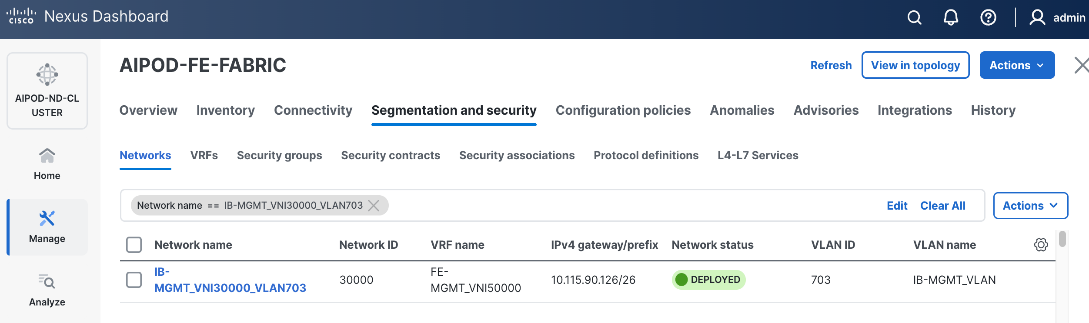

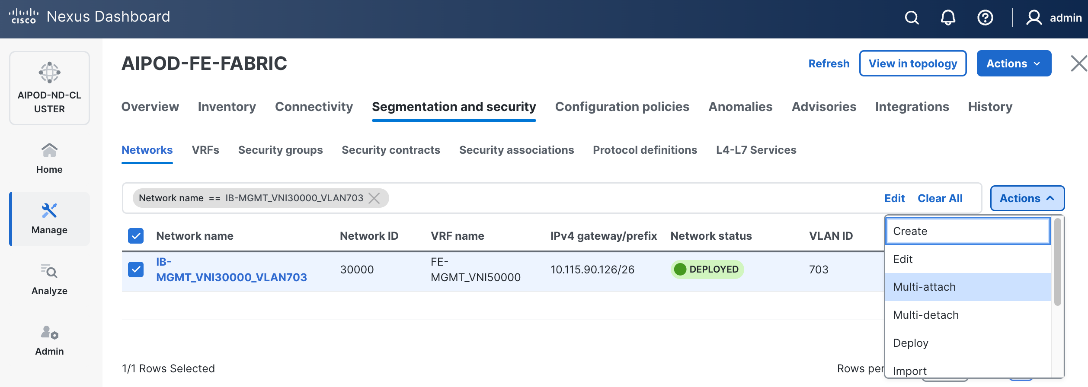

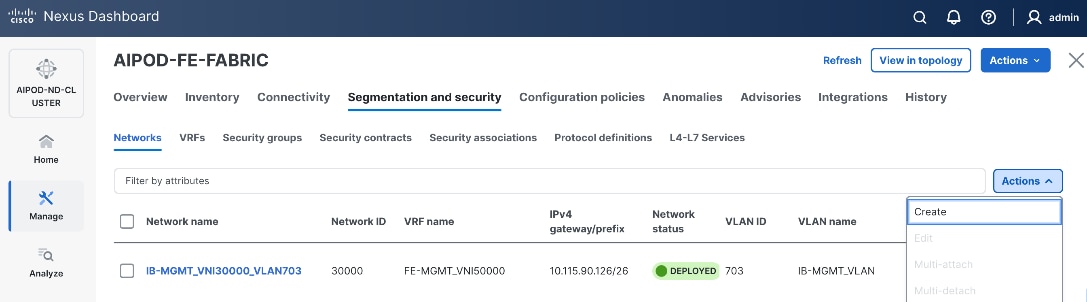

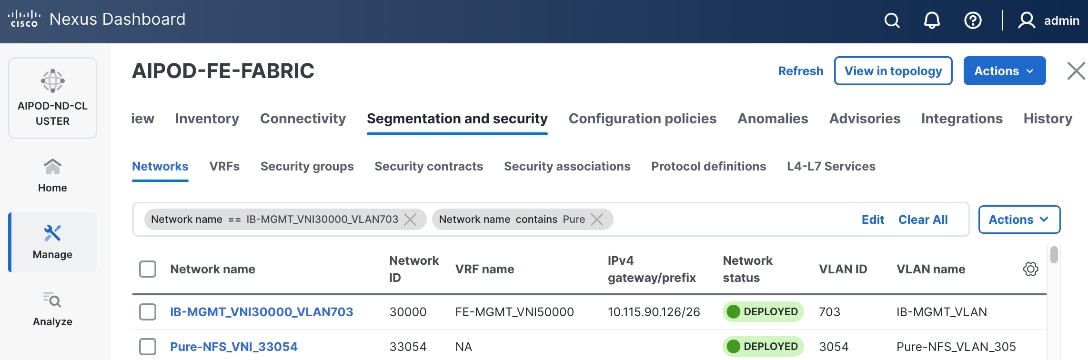

Similar to the backend fabric, the frontend also uses a two-tier spine-leaf Clos topology with MP-BGP VXLAN EVPN to provide connectivity. In this design, both Layer 2 and Layer 3 overlays are used (Table 4) to segment the different types of traffic being transported across this network.

Table 4. Traffic Segmentation and Overlays in Frontend Fabric

| Traffic Type |

Overlay Type |

VLAN |

IP Subnet |

MTU |

| In-Band Management |

Layer 3 |

703 |

10.115.90.64/26 GW: 10.115.90.126 |

1500 |

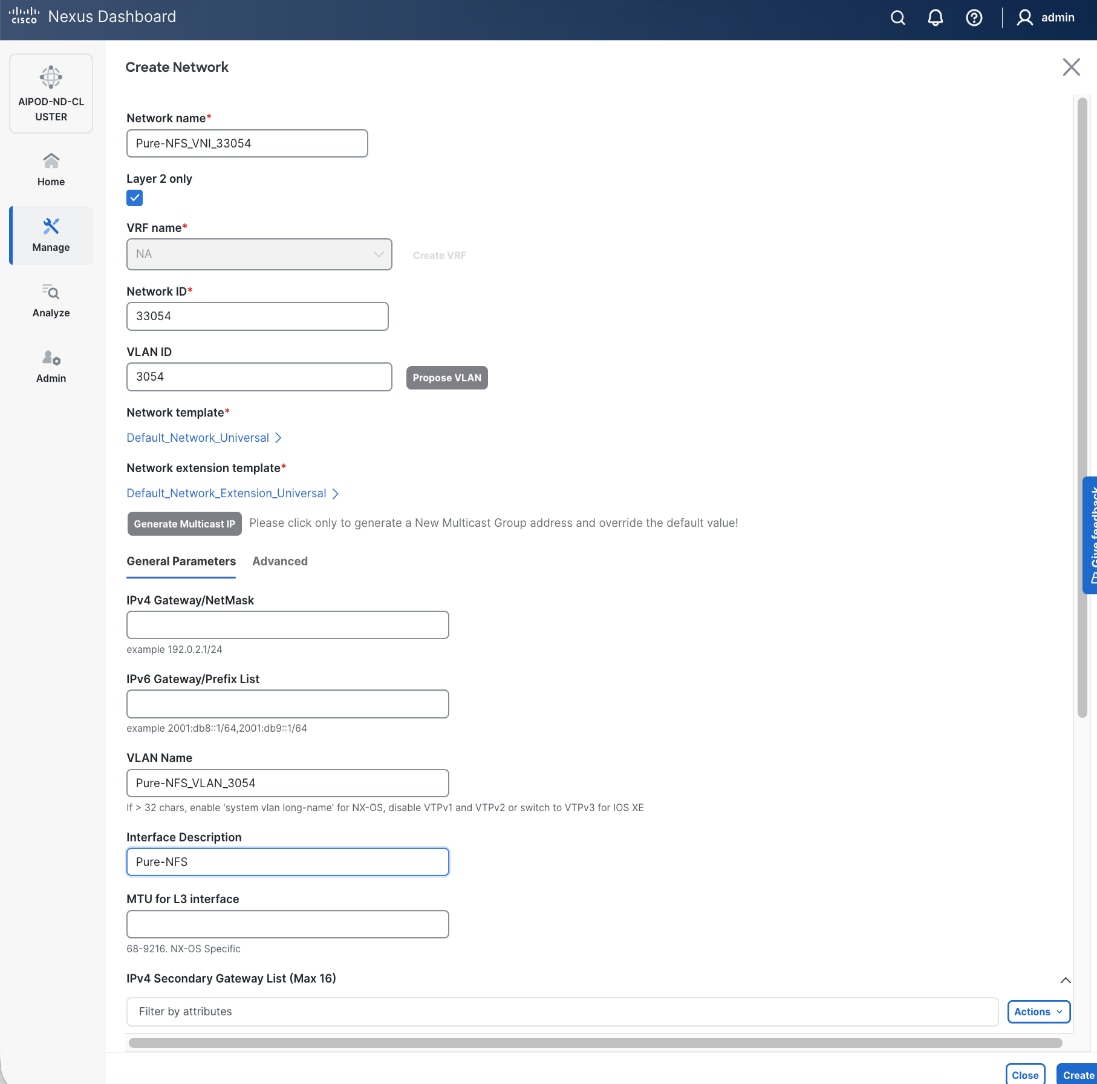

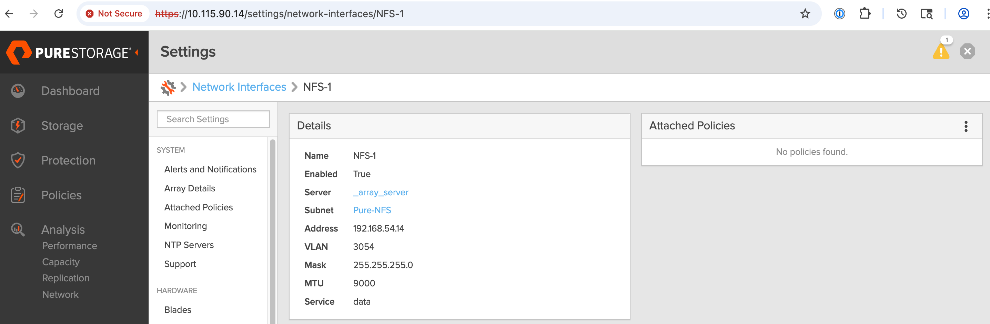

| Storage Data – NFS |

Layer 2 |

3054 |

192.168.54.0/24 |

9000 |

| Storage Data – Object |

Layer 3 |

703* |

10.115.90.64/24 |

1500 |

Note: In an OpenShift environment, object store access is through the OpenShift Cluster IP network. This traffic is routed to the Everpure object store network in the same VRF.

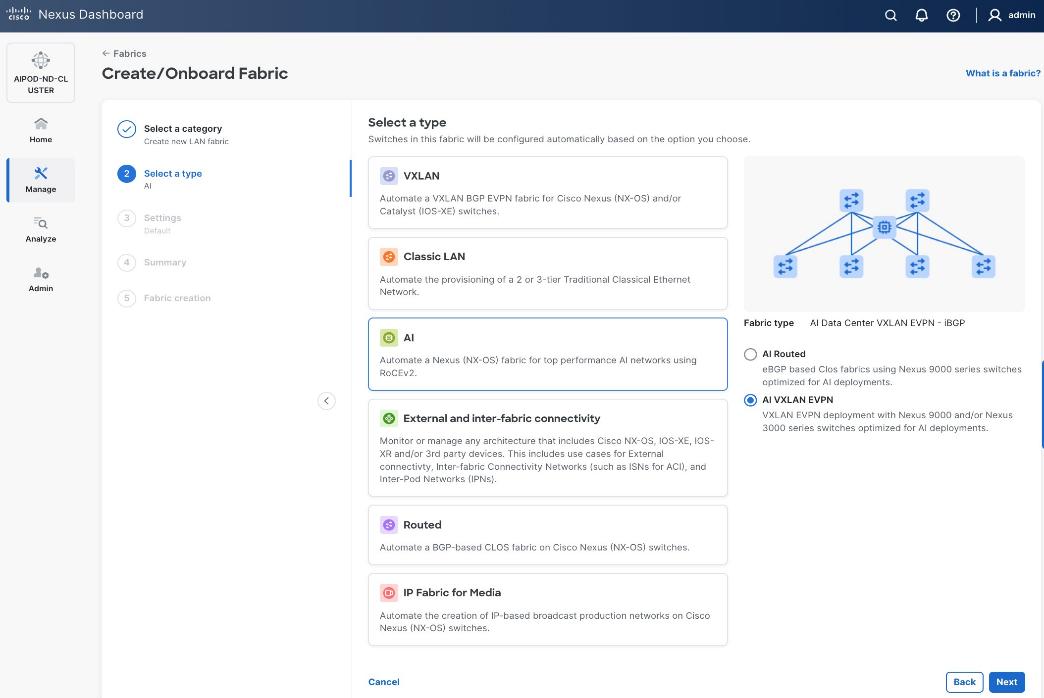

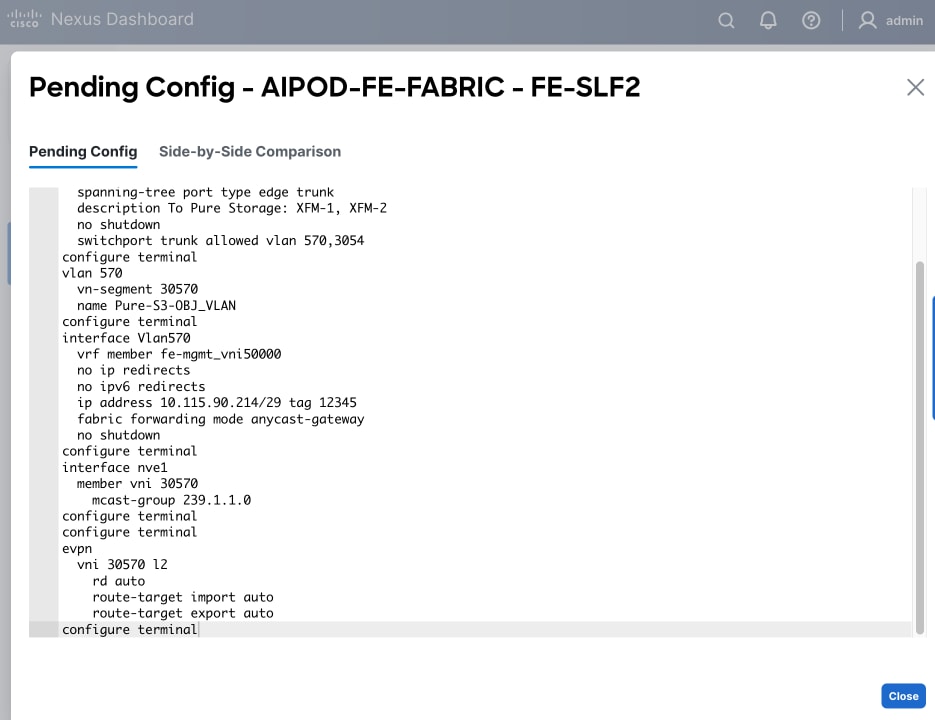

This fabric is also deployed using a pre-built Nexus Dashboard fabric template (Data Center VXLAN EVPN) but one that aligns with the needs of a frontend fabric. It also implements a prescriptive, best-practice design (Figure 12) with the flexibility to customize as needed.

As with the backend fabric, the pre-built template enables the frontend fabric (two-spine, four-leaf) with ~400 lines of configuration (Figure 13) to be deployed in minutes.

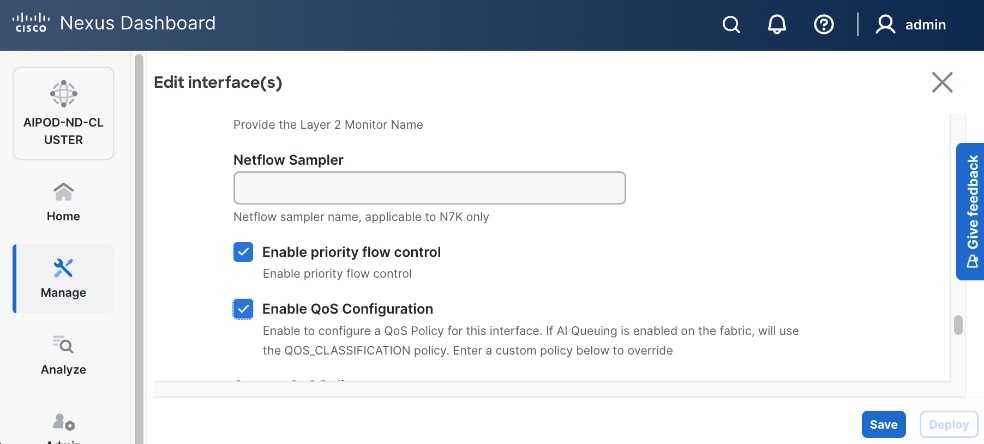

Quality of Service (QoS), including PFC and ECN, was also deployed in this fabric to provide lossless connectivity for the RDMA traffic to Everpure FlashBlade//S. VLANs and overlays provide network isolation for the different traffic types traversing the fabric, but bandwidth fairness is achieved through the QoS policies deployed in the fabric. A dedicated queue is assigned to storage RDMA traffic to ensure consistent performance during data-intensive training. Similar to the backend, an MTU of 9216 is used for PFC and the bandwidth allocation is adjusted to ensure fair treatment.

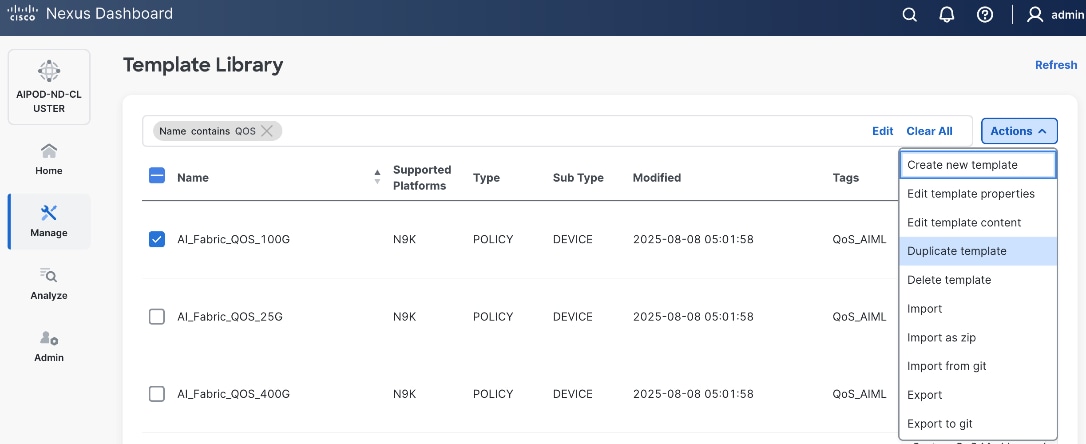

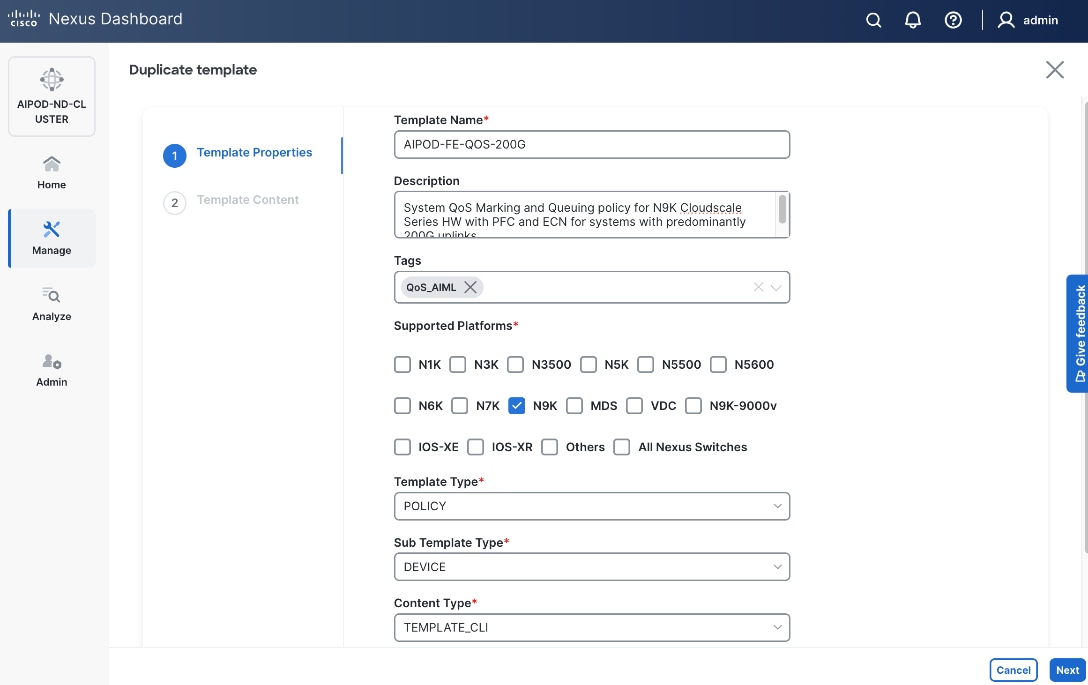

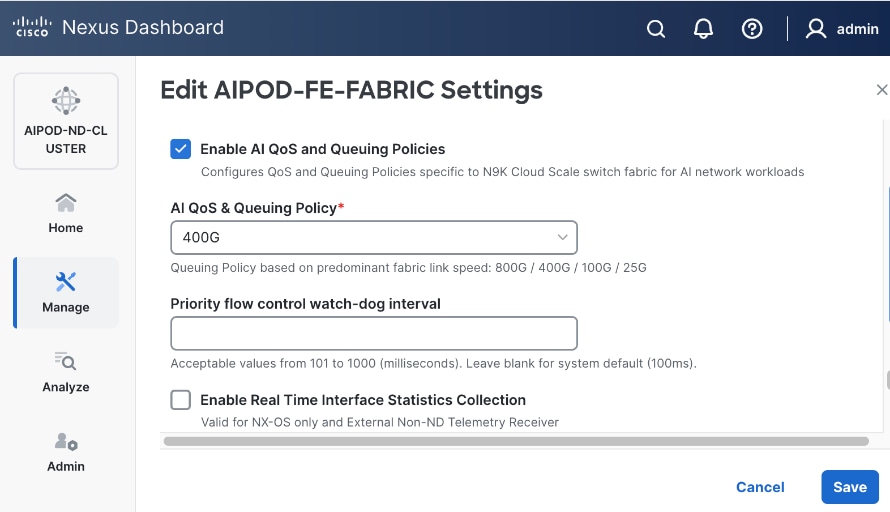

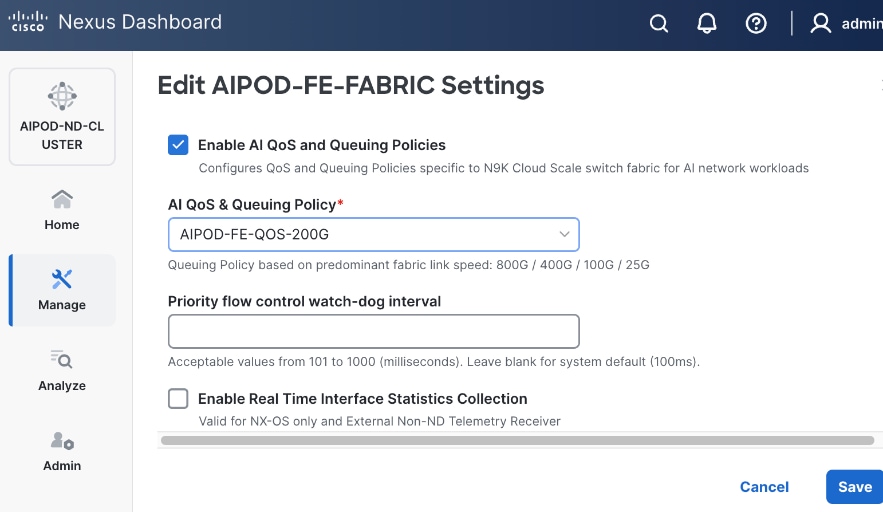

The template deployed in the frontend does not include a QoS policy by default. For the CVD, a new QoS policy was created to reflect the 200GbE design used in this design. The new policy was created by duplicating an existing policy and making the necessary adjustments. Customers should review the available QoS policies and customize it as needed to meet the needs of their environments.

Everpure FlashBlade//S

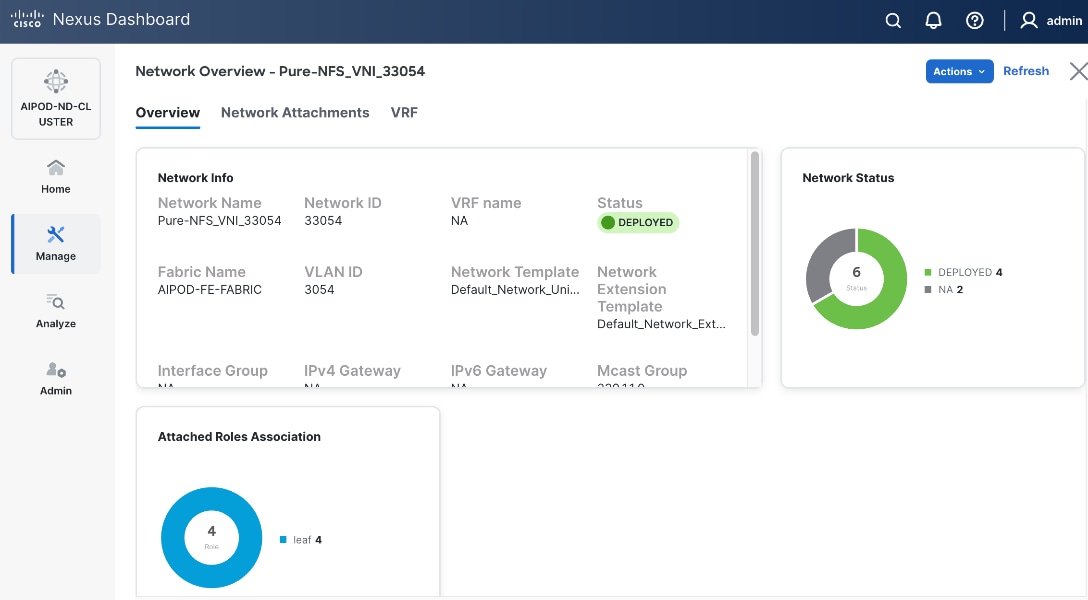

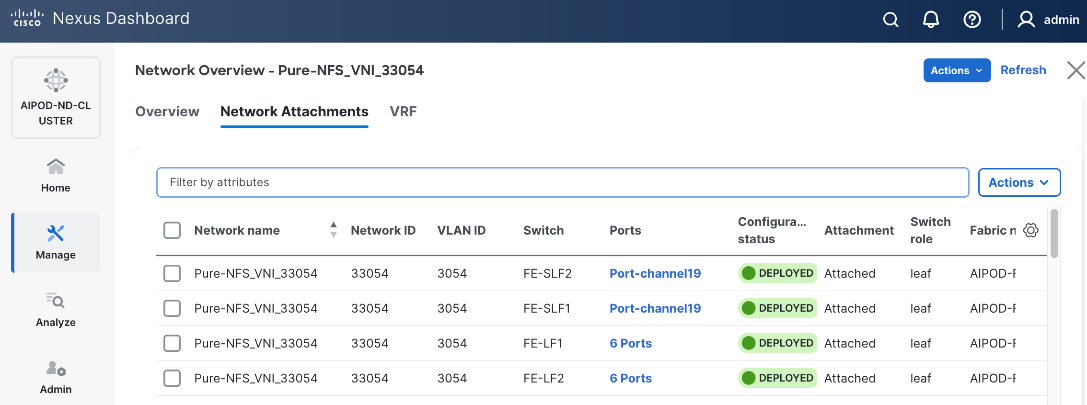

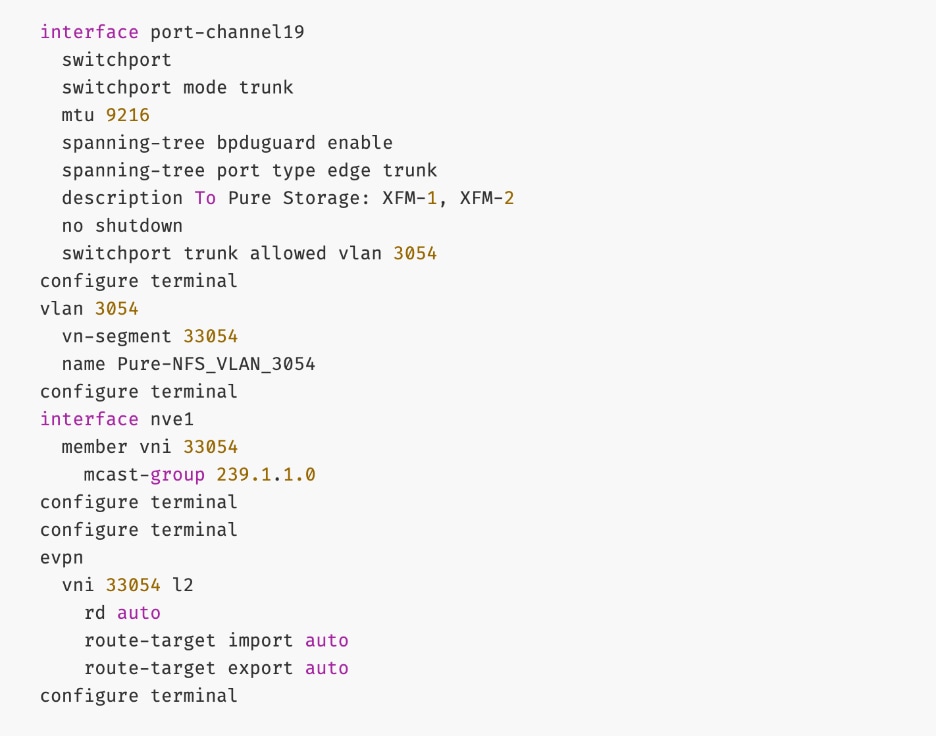

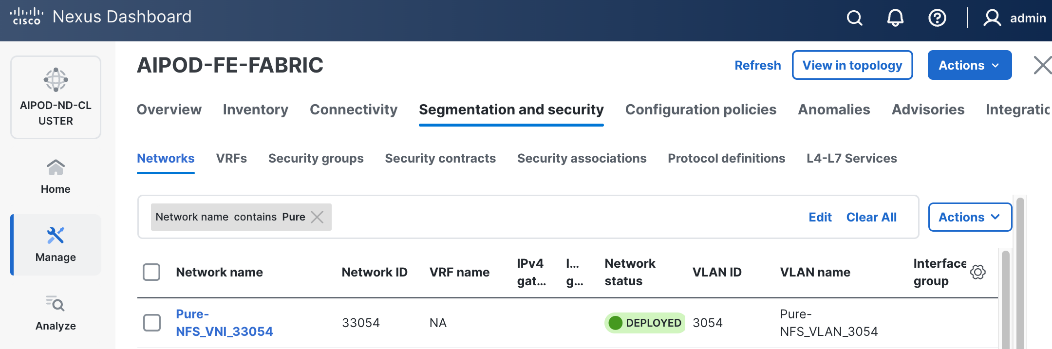

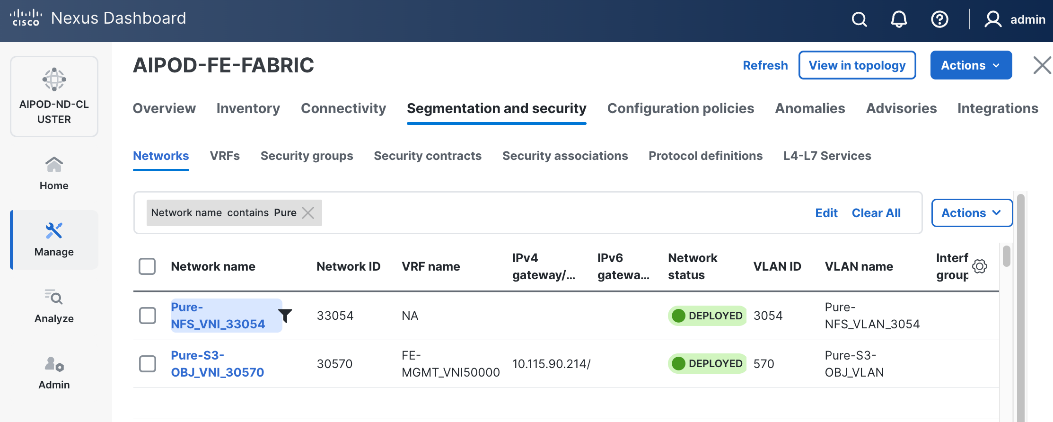

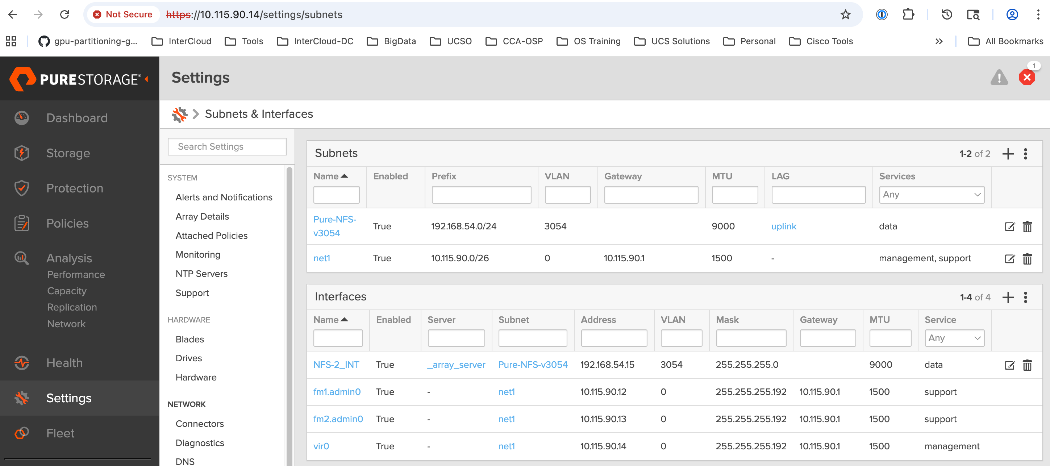

The storage sub-system provides a unified platform for both file and object data protocols using Everpure FlashBlade. In this design, Everpure XFM-8400R2 modules serve as a storage aggregation layer, bundling individual links into a high-capacity 400GbE port-channel that connect to dedicated storage leaf switches in the frontend fabric. As storage demands grow, the scale-out architecture enables enterprises to incrementally scale their deployment, both by adding capacity within a FlashBlade//S system or by adding more systems as shown in Figure 14.

The NFS and object store traffic to and from Pure FlashBlade//S are segmented using different VLANs and trunked on the port channels from the XFMs to the storage leaf switches. NFS traffic uses a Layer 2 overlay while the S3 object store traffic uses a Layer 3 overlay across the frontend fabric.

Table 5. Everpure FlashBlade//S VLANs

| VLAN Name |

VLAN ID |

vPC ID |

L2VNI |

IP Subnet |

Purpose |

| OOB-MGMT |

550 |

N/A |

N/A |

10.115.90.14/26 (XFM-VIP) |

Pure One/Mgmt. Access |

| Pure-NFS_VLAN_3054 |

3054 |

19 |

33054 |

192.168.54.0/24 |

FE: NFS Storage Data |

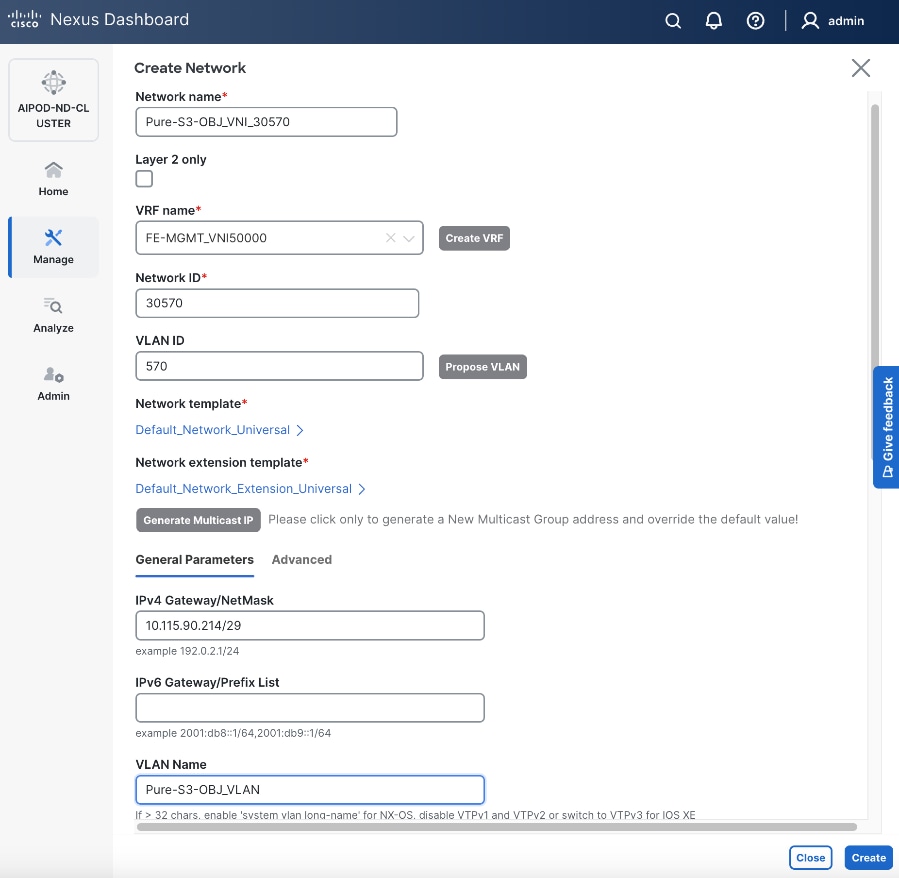

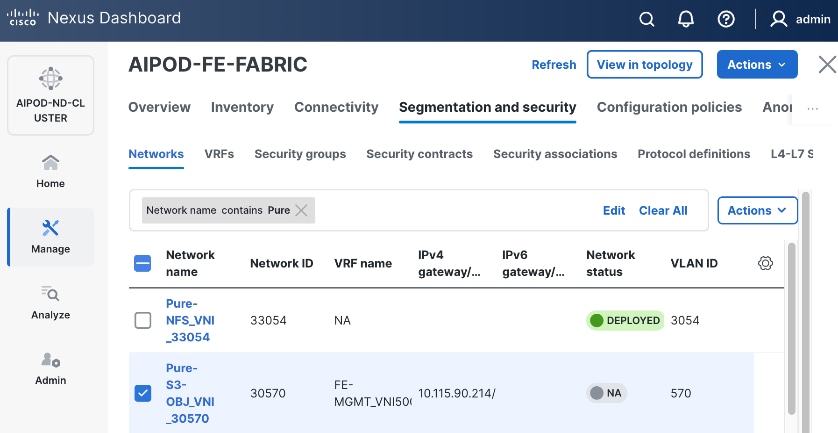

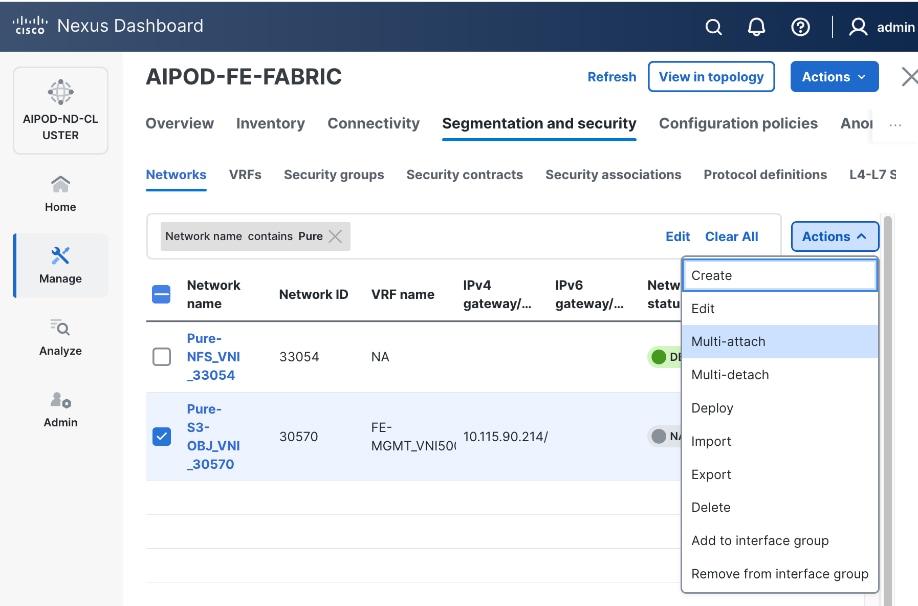

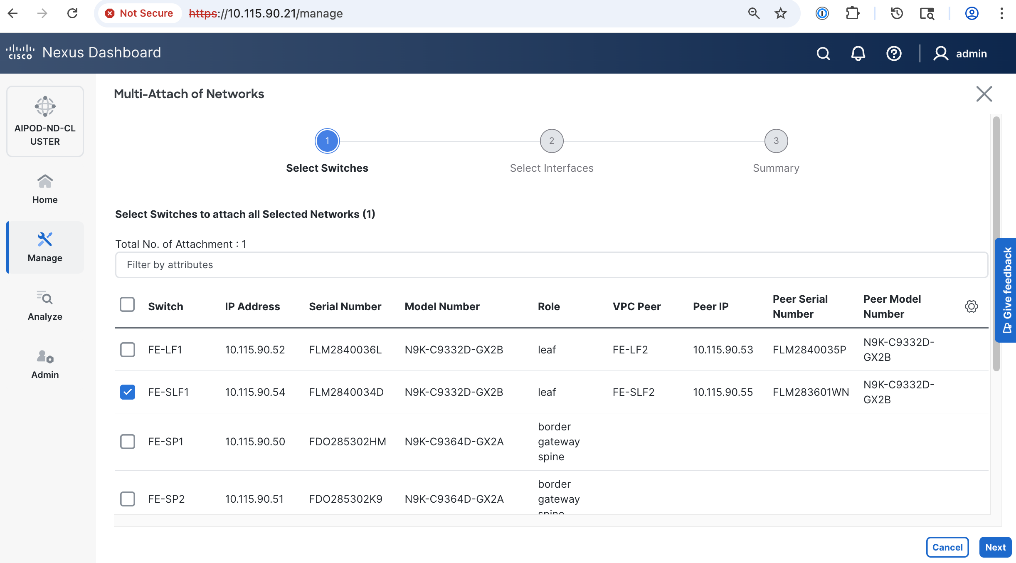

| Pure-S3-OBJ_VLAN |

570 |

19 |

30570 |

10.115.90.208/29 |

FE: Object Store Data |

|

|

703 |

111-114 |

30703 |

10.115.90.64.0/26 |

FE: UCS C885A (Client side) |

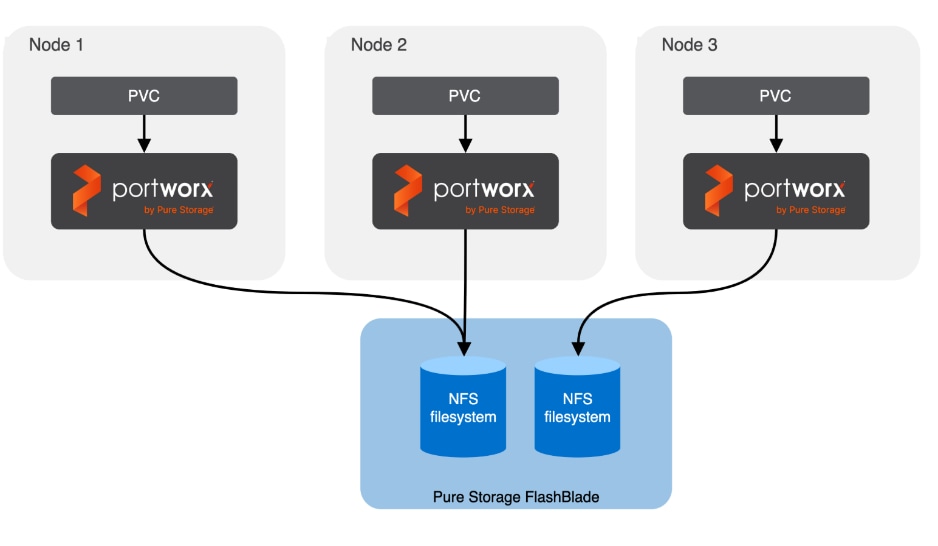

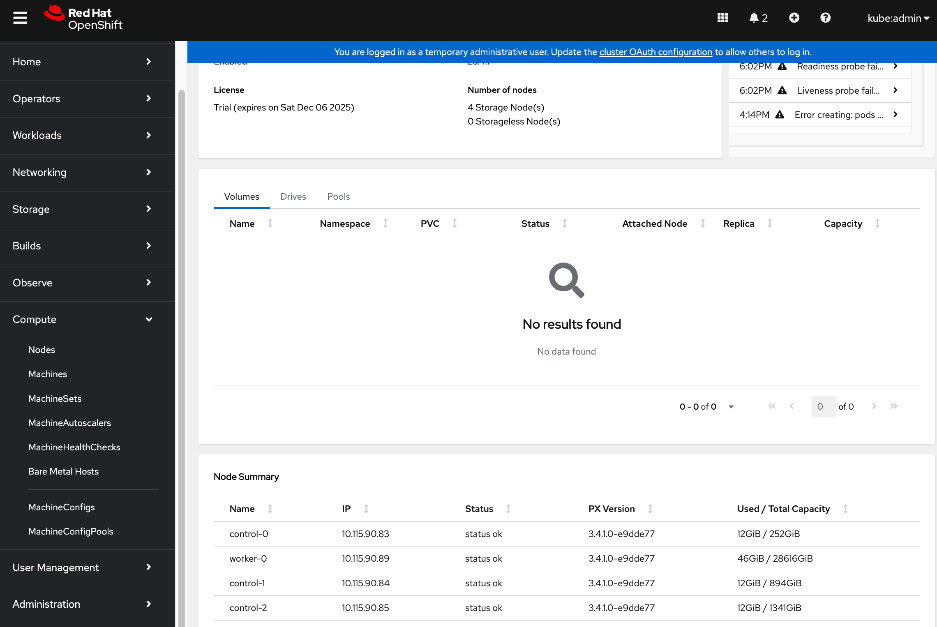

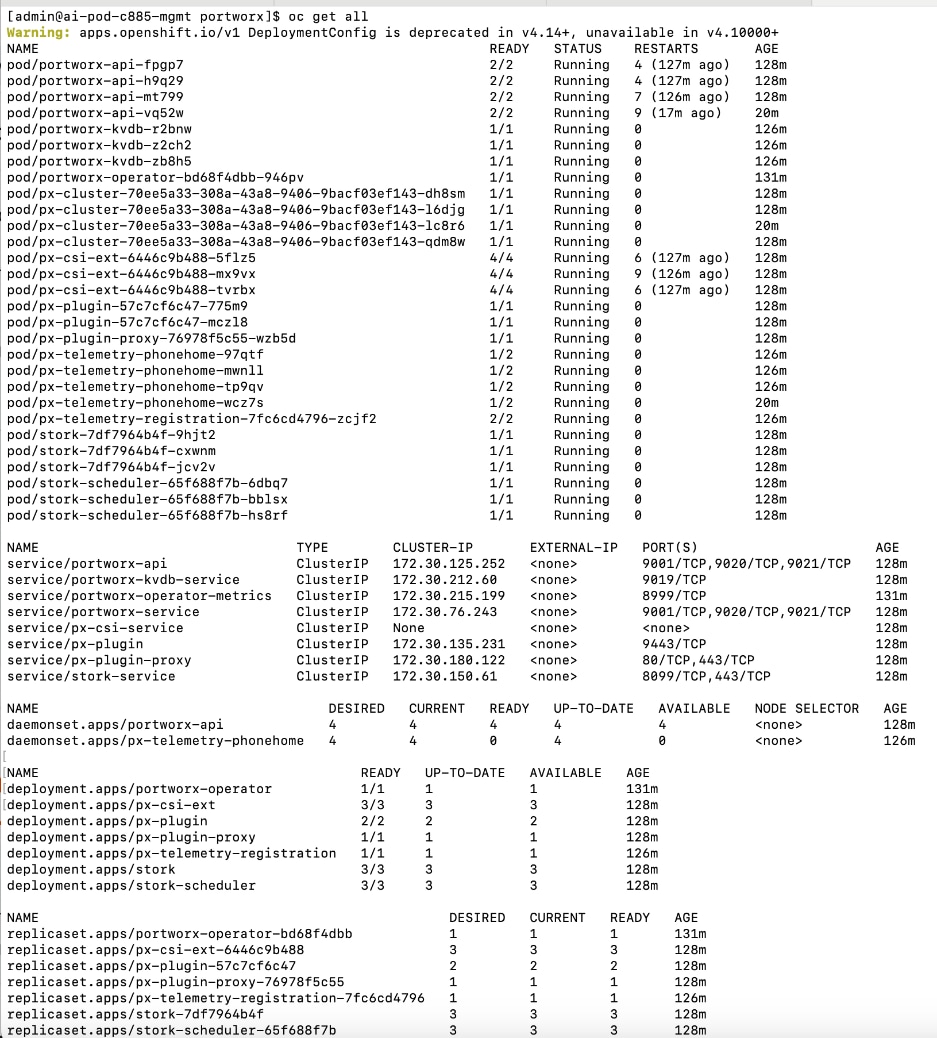

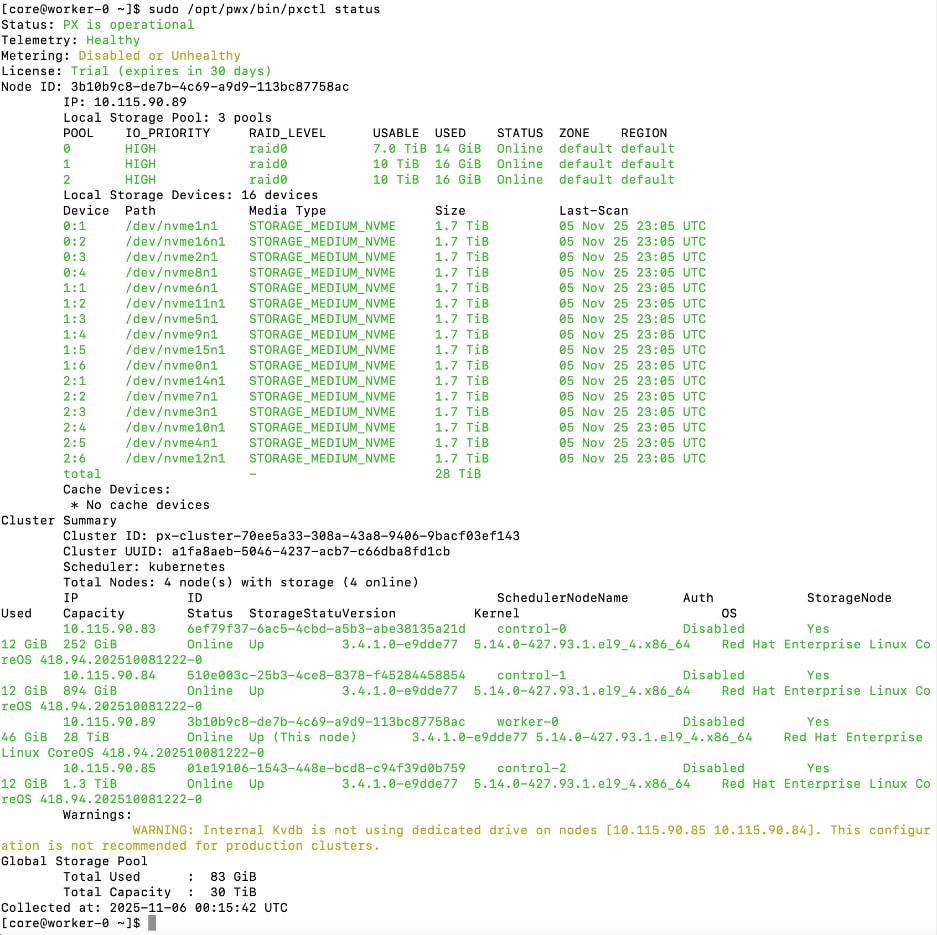

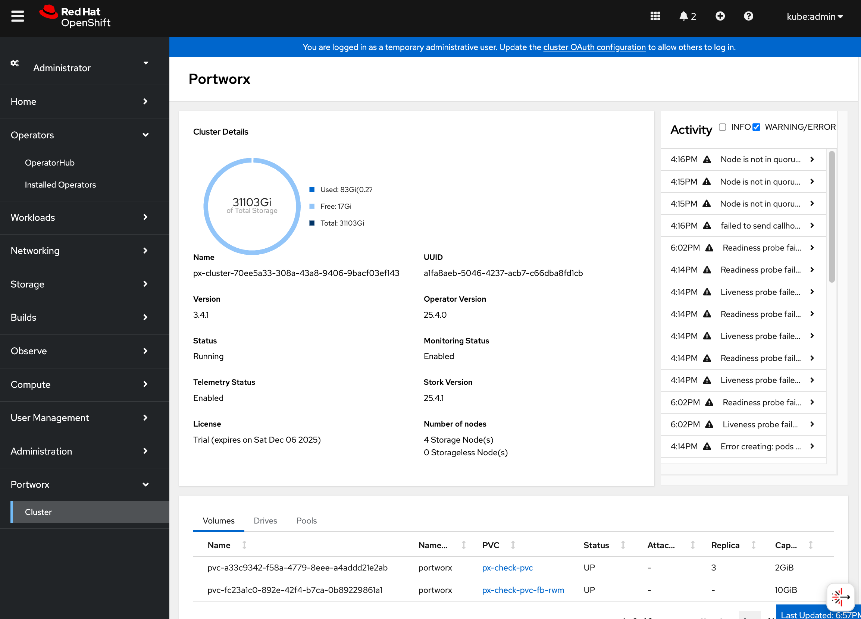

Portworx by Everpure provides persistent storage for the Kubernetes workloads in this design. It functions as a Container Storage Interface (CSI) plugin to enable dynamic storage provisioning for workloads running on OpenShift. The solution also includes a Kubernetes operator that automates deployment, configuration, and upgrades within the cluster.

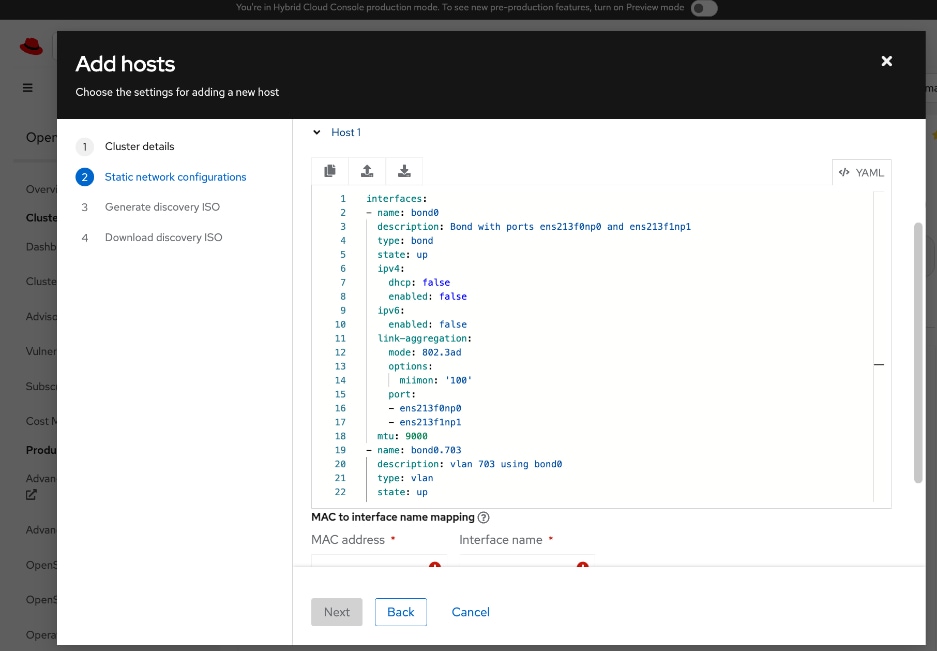

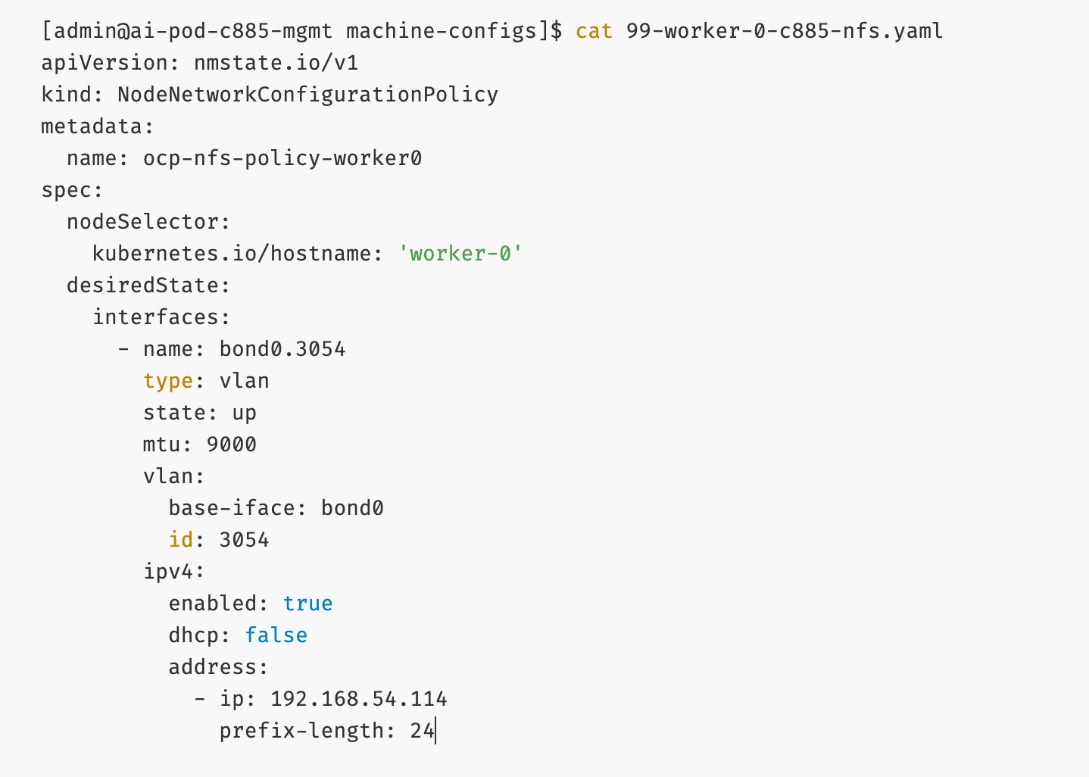

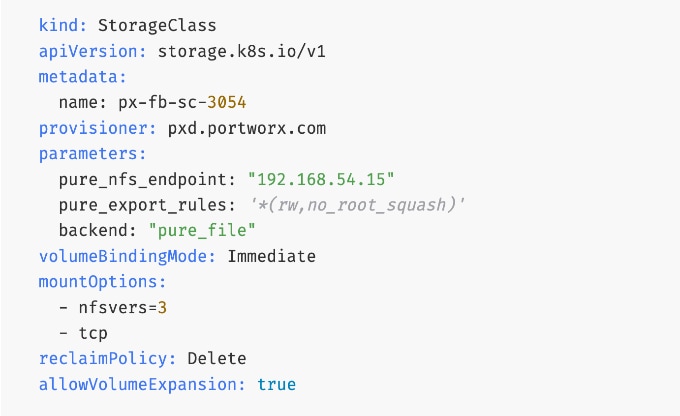

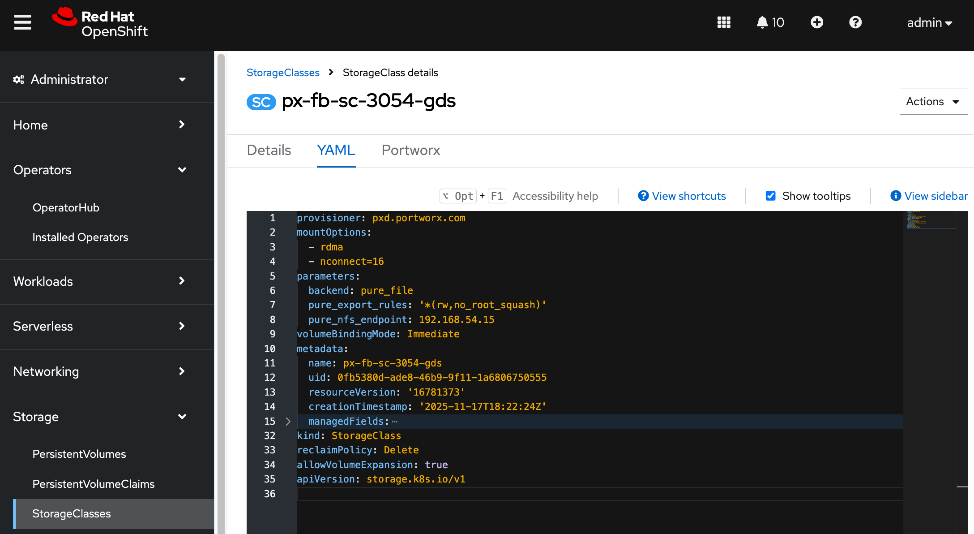

For AI deployments, Portworx by Everpure is backed by NFS file systems running on Pure FlashBlade, as shown in Figure 15. The design utilizes the nconnect=16 mount option in the defined Storage Class, allowing the compute nodes to establish multiple parallel TCP sessions to the Everpure FlashBlade. This ensures the frontend uplinks are fully utilized during data-intensive training phases. Additionally, the Red Hat NMState Operator is deployed to provision the storage networking required to enable NFS storage access from the UCS worker nodes in the OpenShift cluster.

AI (Kubernetes) workloads running on the OpenShift cluster also have direct access to object stores deployed on Everpure FlashBlade//S. A dedicated network and interface is provisioned on the Everpure for this and the storage access traffic from these workloads will be routed to this network to access the S3 buckets.

A quick CLI based example of deploying and validating NFS using RDMA using Everpure FlashBlade//S is provided in the AI POD GitHub repo (see Everpure folder). The specifc deployment steps used in this CVD in provided in later sections.

Cisco UCS Compute

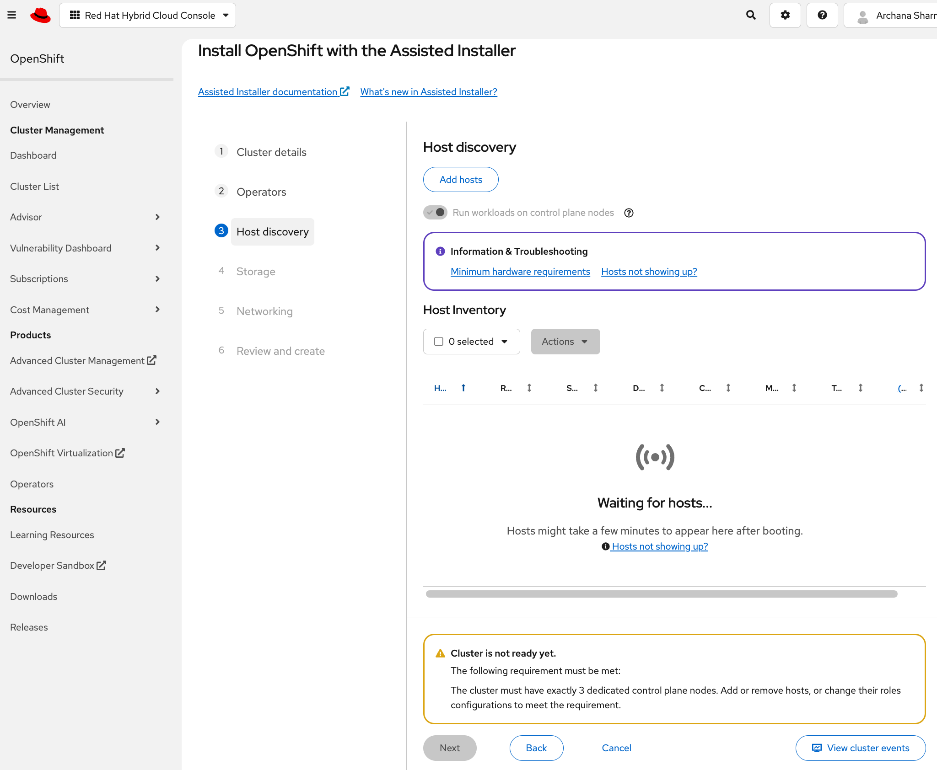

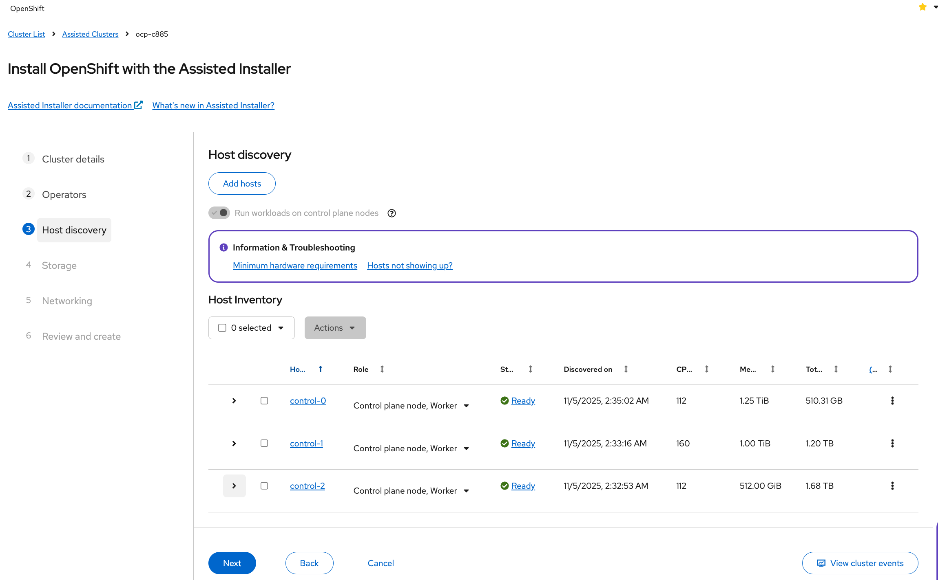

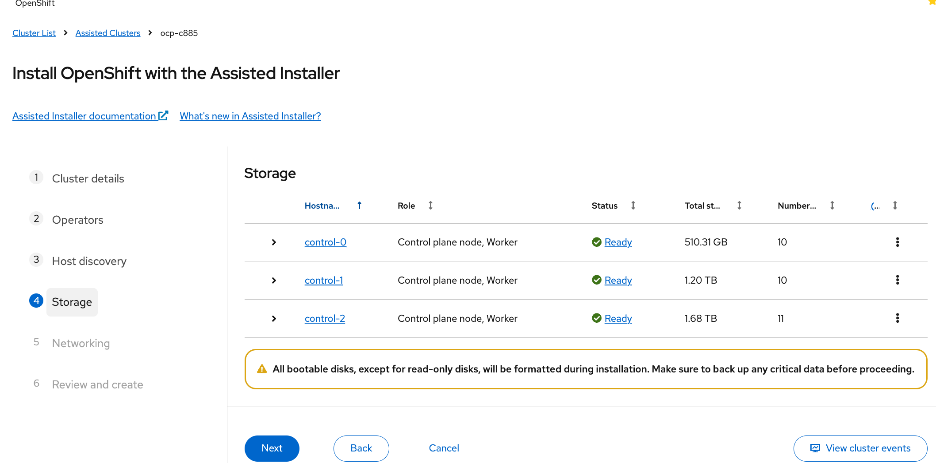

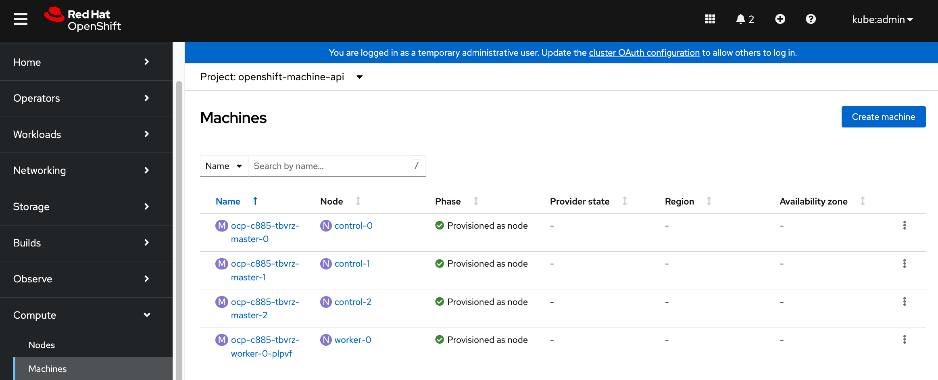

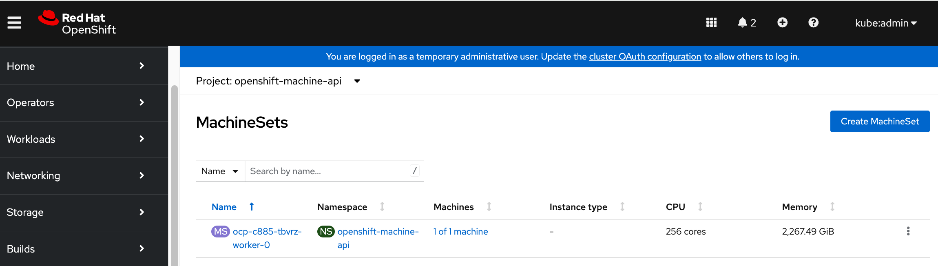

A Cisco UCS X-Series Direct system with three servers is deployed as control nodes for the OpenShift cluster. These servers are provisioned using a Server Profile Template in Cisco Intersight and deployed as bare-metal nodes using the Cisco Intersight integration in the Red Hat Assisted Installer workflow.

The initial configuration of these servers requires at least one network interface to be configured with the OpenShift cluster IP network VLAN to bring the cluster up. The necessary VLANs for control, management, and auxiliary services are trunked over the virtual port channel (vPC) to the Cisco UCS X-Series Direct chassis.

Once the cluster is operational, the Cisco UCS C885A GPU nodes are added as worker nodes. This is done as a follow-on step because these servers require different provisioning and networking configurations compared to the Cisco UCS X-Series servers. Also, unlike the GPU nodes, Cisco UCS X-Series server’s use:

● Server Profile Templates and Profiles in Cisco Intersight for initial configuration.

● Cisco Intersight integration within the Red Hat Assisted Installer for bare metal deployments.

Post-deployment, Cisco Intersight is used for ongoing operations and management of all servers.

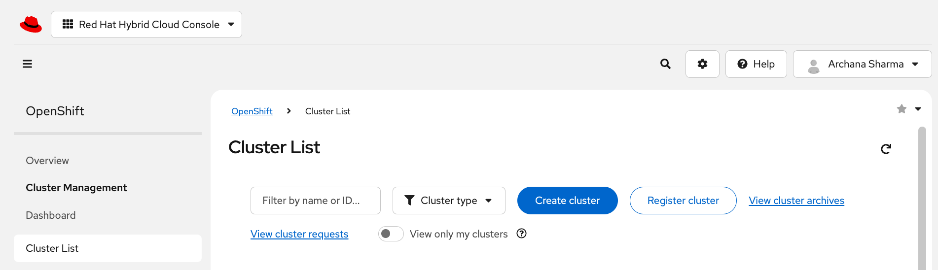

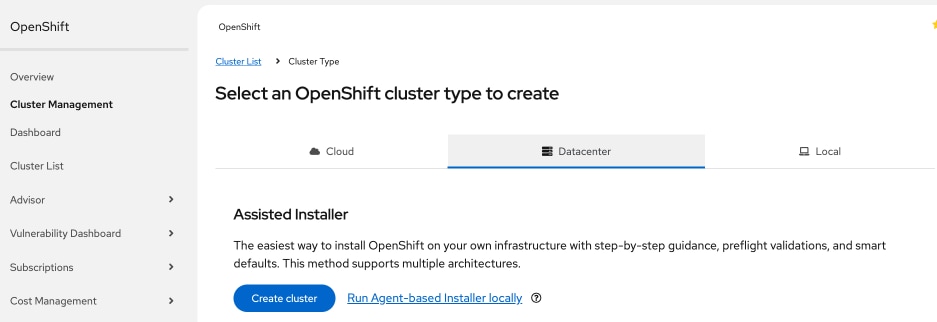

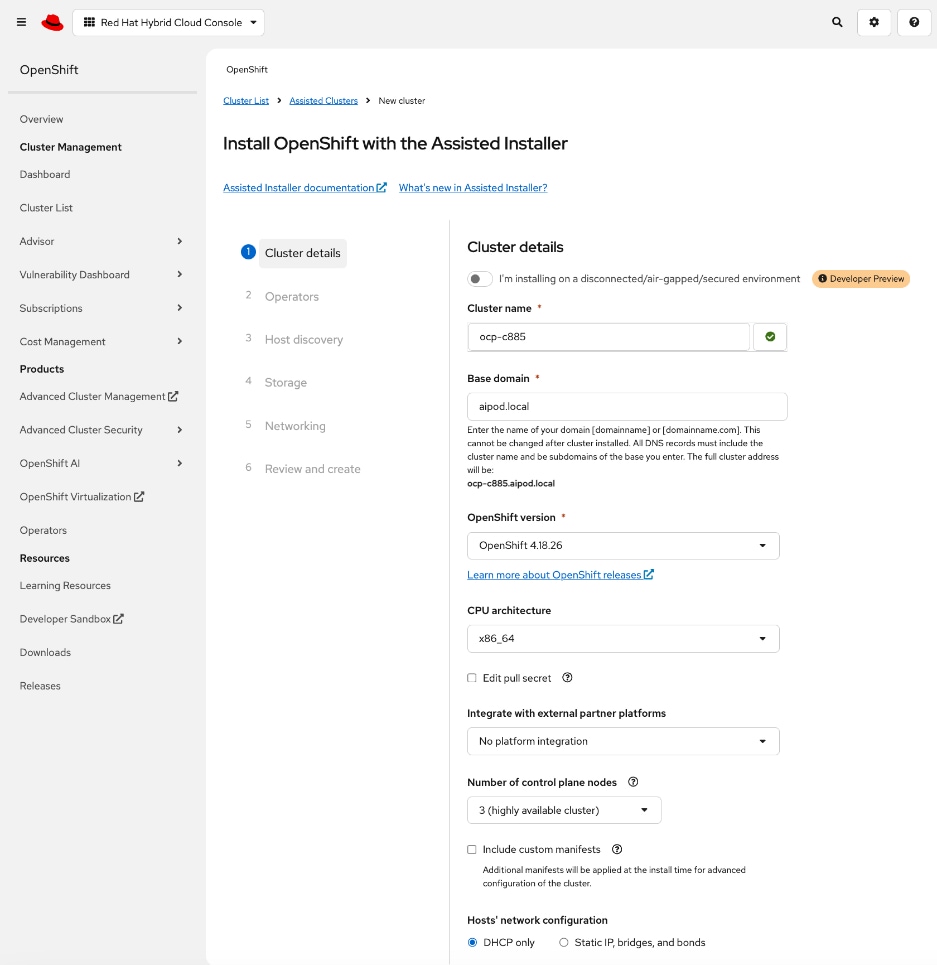

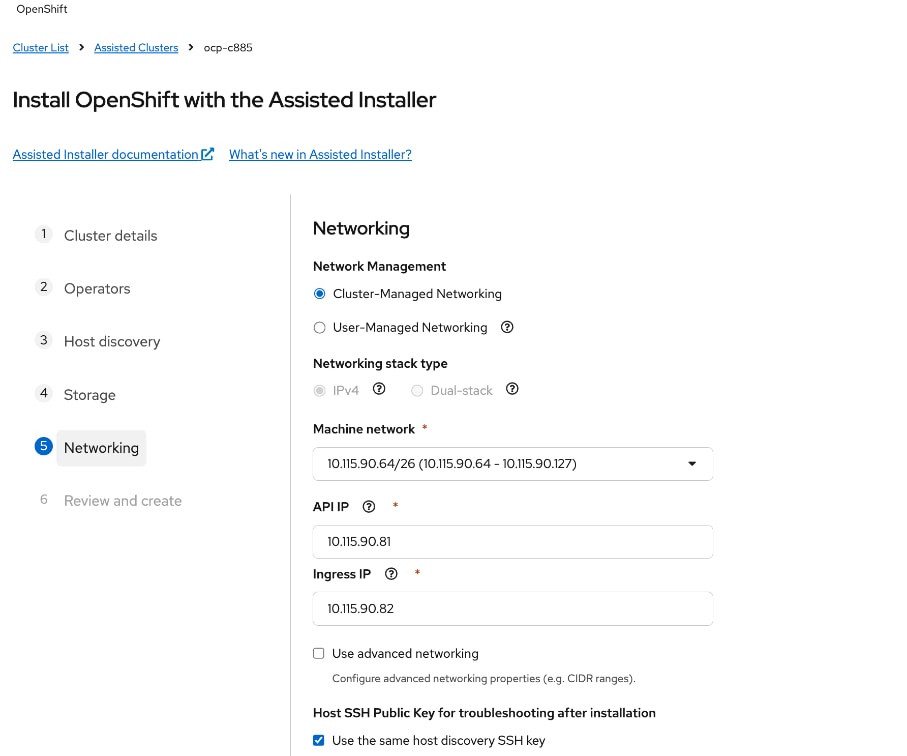

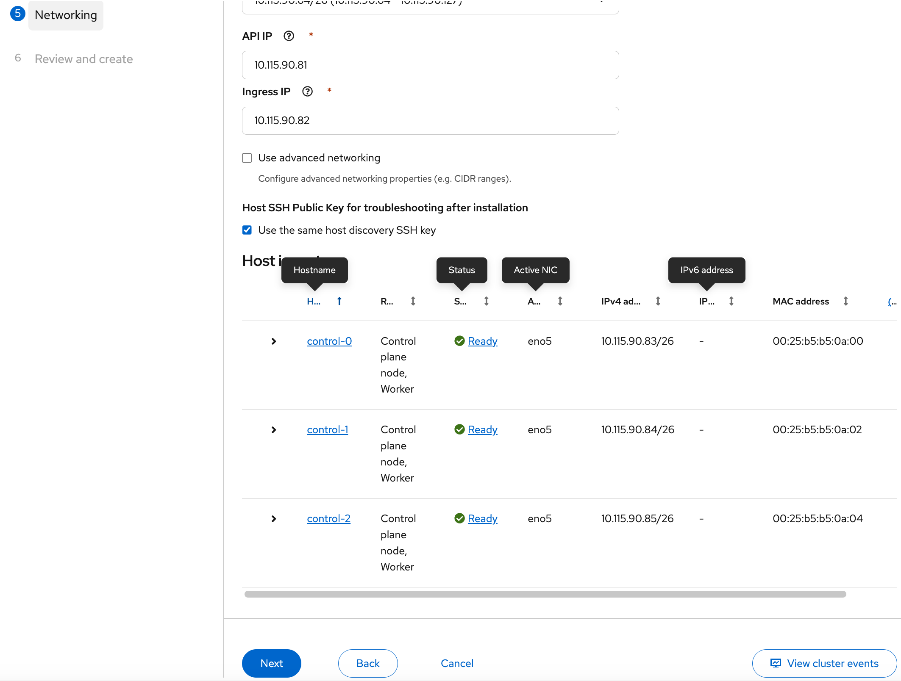

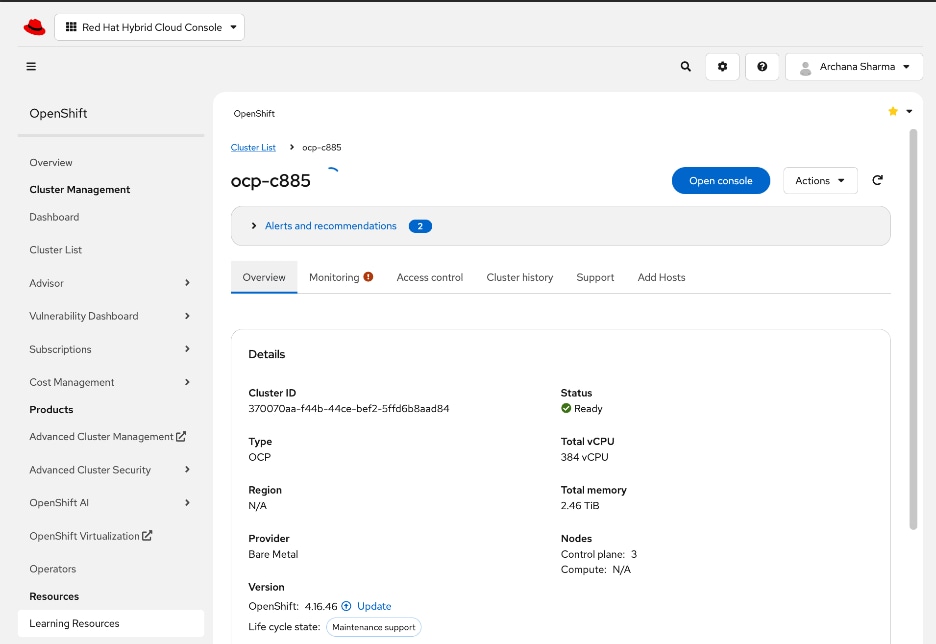

Red Hat OpenShift

The OpenShift cluster is deployed using the recommended Red Hat Assisted Installer available via the Red Hat Hybrid Cloud Console, a SaaS-based service in the cloud. The installer requires DNS and DHCP services, and external connectivity to the Red Hat Hybrid Cloud Console to be in place before the installation can start. An OpenShift Installer workstation (any Linux distribution) is also required to deploy and manage the cluster.

Three servers in the Cisco UCS X-Series Direct are deployed as a highly available control plane for the OpenShift cluster. The OpenShift cluster is implemented as a compact cluster, allowing the Cisco UCS X-Series servers to also function as worker nodes to host other services and workloads. In this design, these servers host secondary DHCP and DNS services by leveraging OpenShift Virtualization. The design and deployment of OpenShift Virtualization is outside the scope of this document.

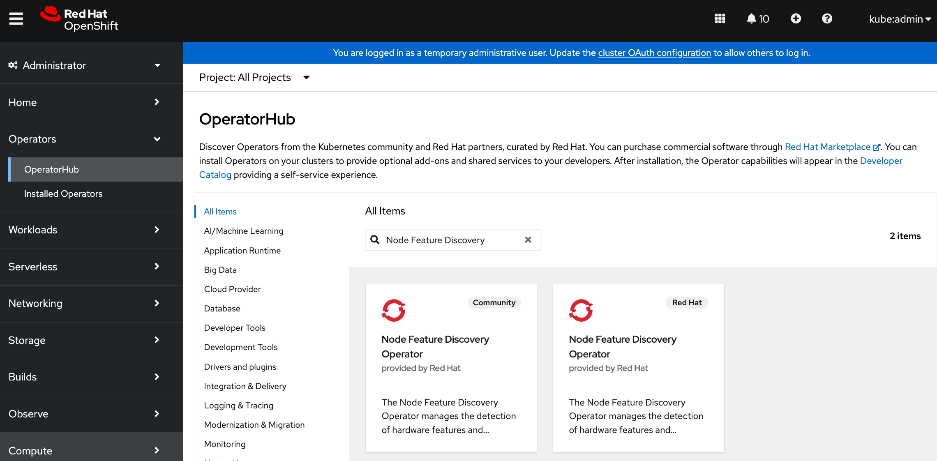

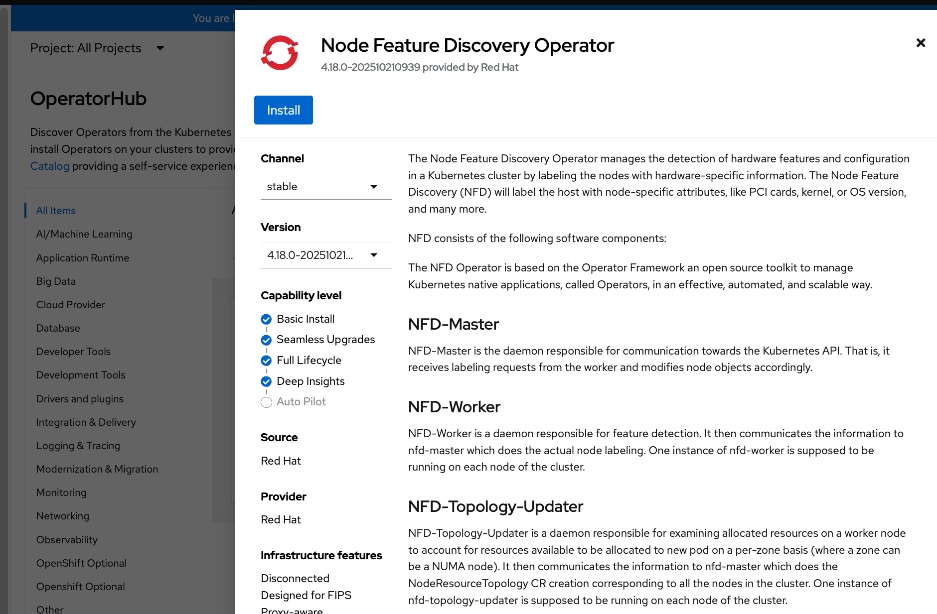

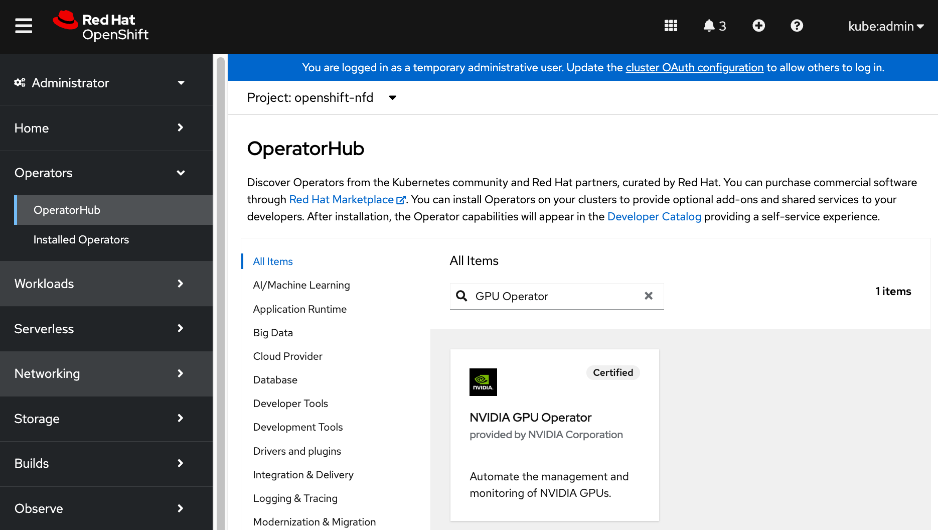

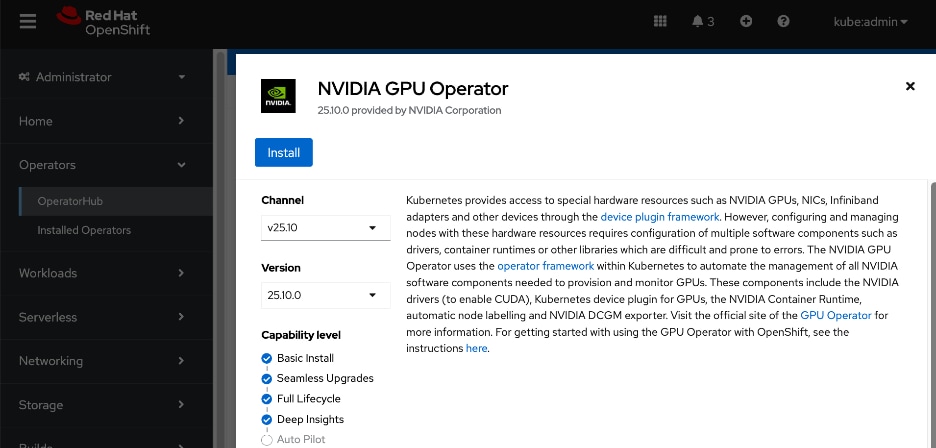

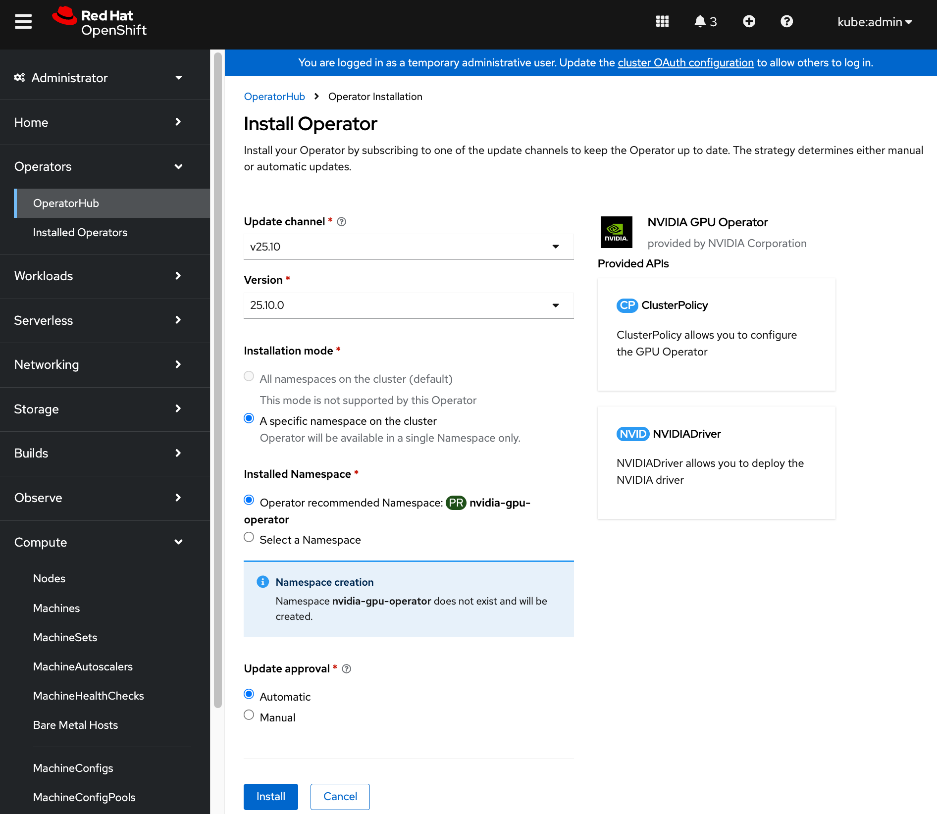

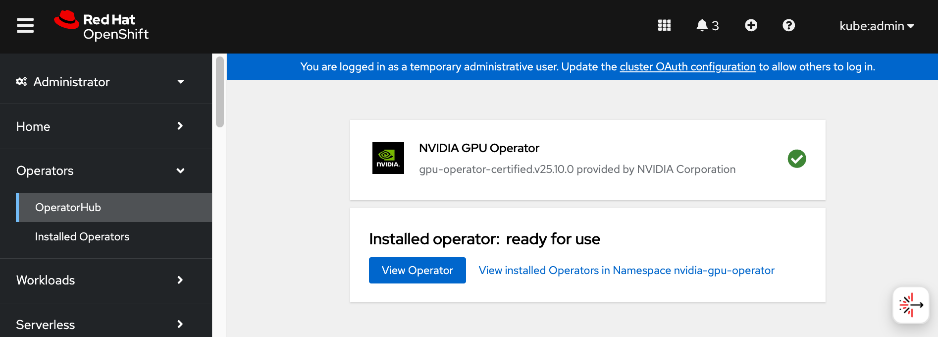

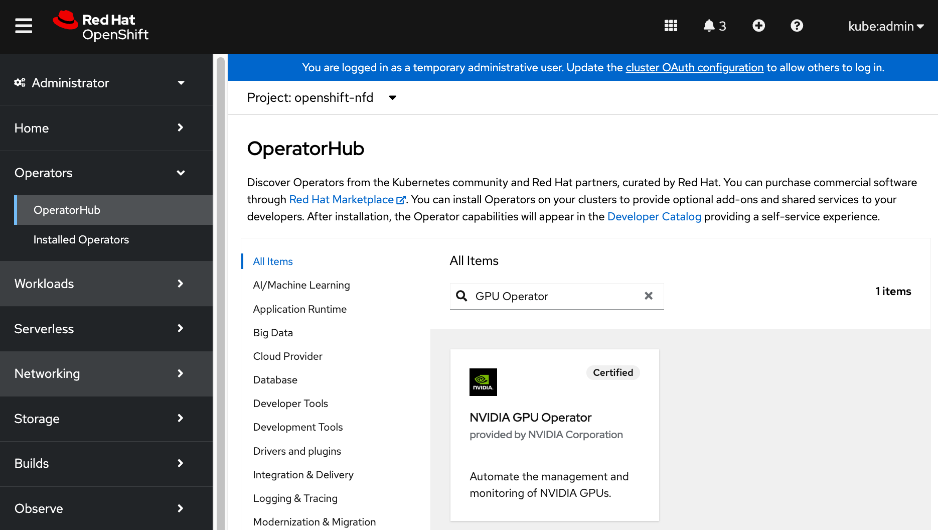

As stated earlier, the UCS GPU nodes are added as worker nodes to the same OpenShift cluster. The networking connectivity to the frontend fabric is provisioned at install time. The remaining configuration is done using OpenShift operators once the cluster is up and running with GPU worker nodes. All required operators are available via the Red Hat Operator Hub and are directly accessible from the OpenShift cluster console. The following operators are used to provision the GPU worker nodes in this design.

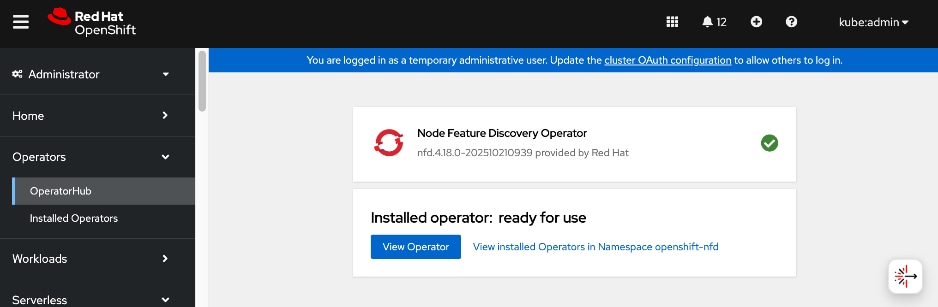

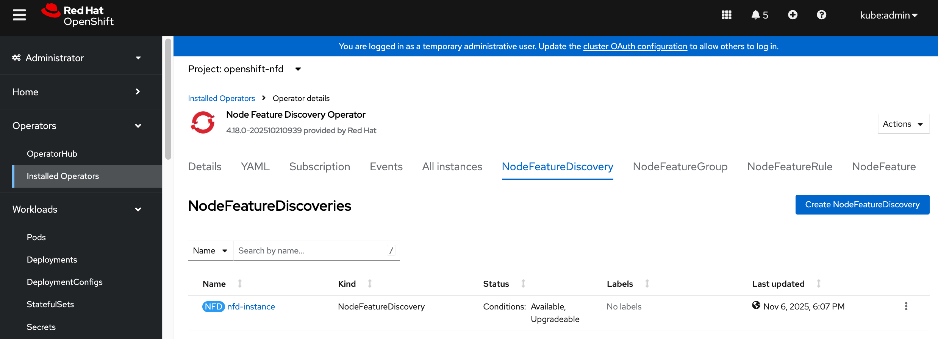

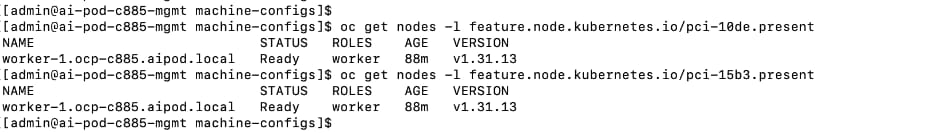

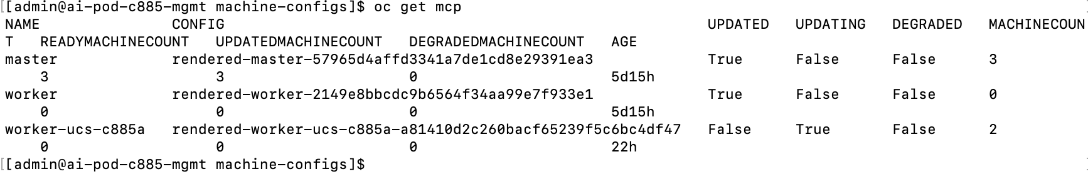

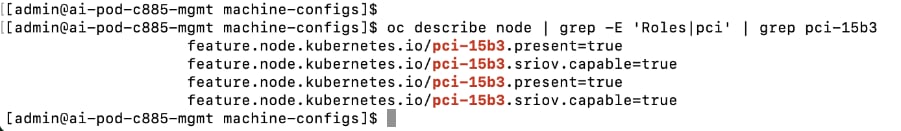

● Red Hat Node Feature Discovery (NFD) Operator is responsible for discovering and exposing the hardware capabilities of the NVIDIA GPUs and NICs in the UCS worker nodes. NFD labels the nodes with hardware-specific information (for example, PCI vendor code, CUDA capabilities, kernel version), enabling NVIDIA GPU and Network operators to deploy and configure the devices accordingly. The NFD operator detects and labels the NVIDIA NIC and GPU as shown. Multiple labels are added for a given hardware, but the presence of a NVIDIA GPU and NIC is indicated by the following labels:

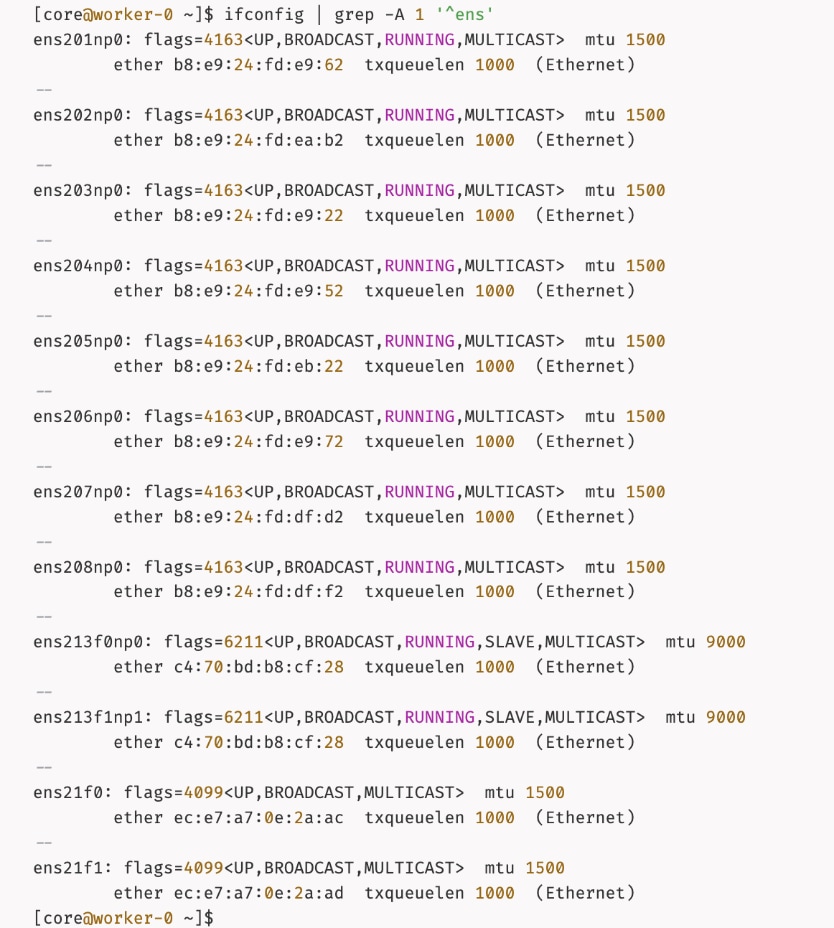

◦ feature.node.kubernetes.io/pci-10de.present=true (GPU)

◦ feature.node.kubernetes.io/pci-15b3.present=true (NIC)

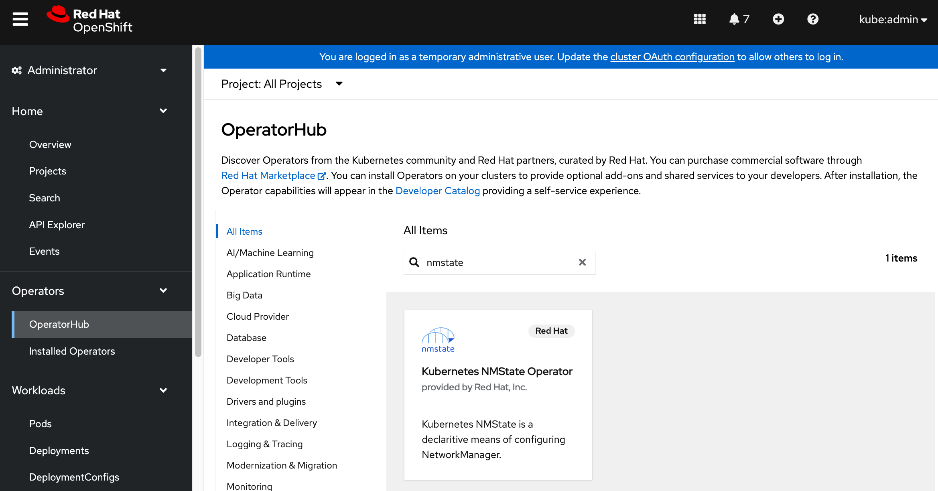

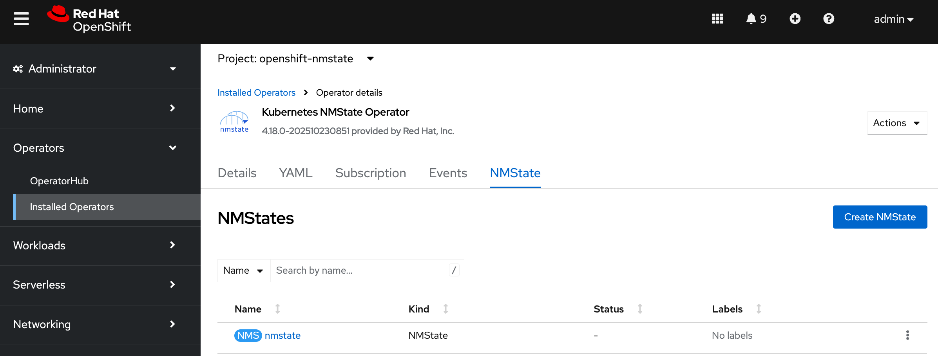

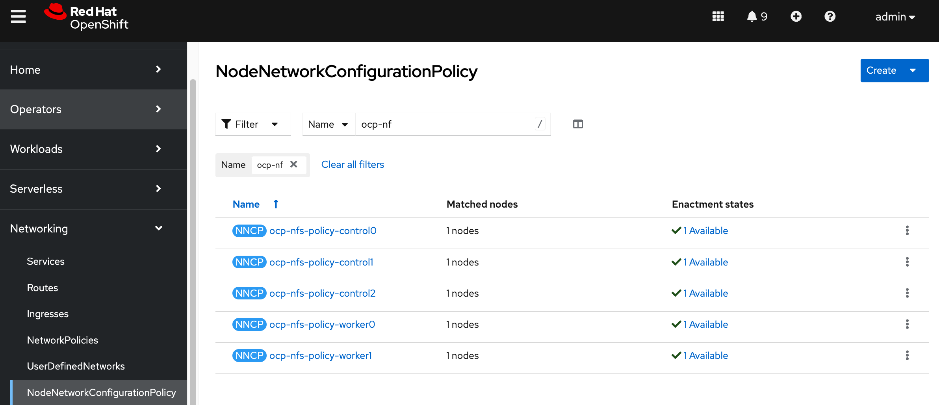

● Red Hat NMState Operator provides a declarative, Kubernetes-native way to manage node-level networking across the OpenShift cluster. Instead of manually configuring each server, you define the desired network state (such as IP addresses, MTU, and interface bonding) through a Kubernetes API. For this CVD, the NMState operator is used to adding storage interfaces with VLAN trunking, changing MTU to support jumbo frames etc. By using this operator, the network configuration becomes part of the cluster's desired state and by applying it to all worker nodes (for example) makes it easier to scale the AI POD by automatically applying the correct settings to new nodes as added.

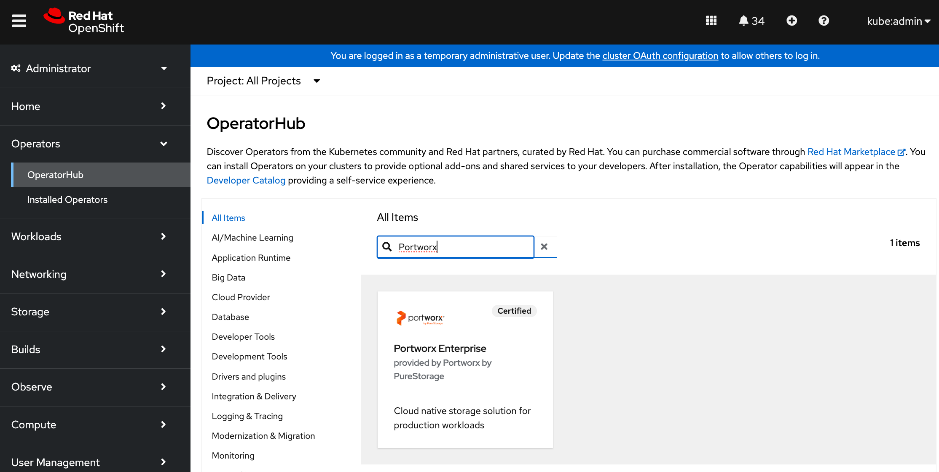

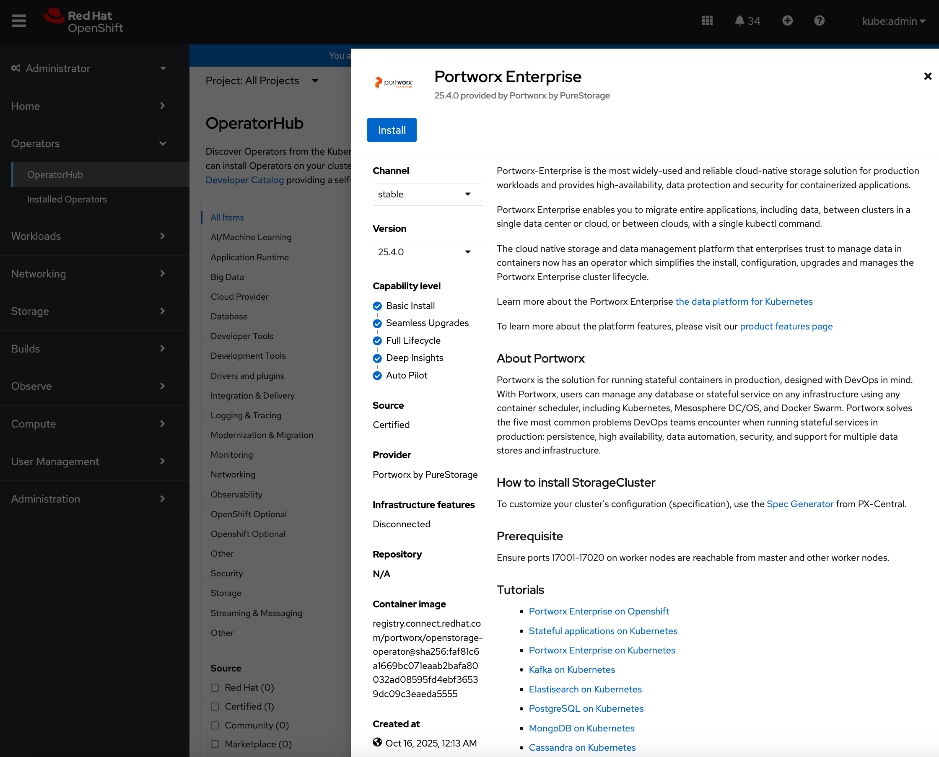

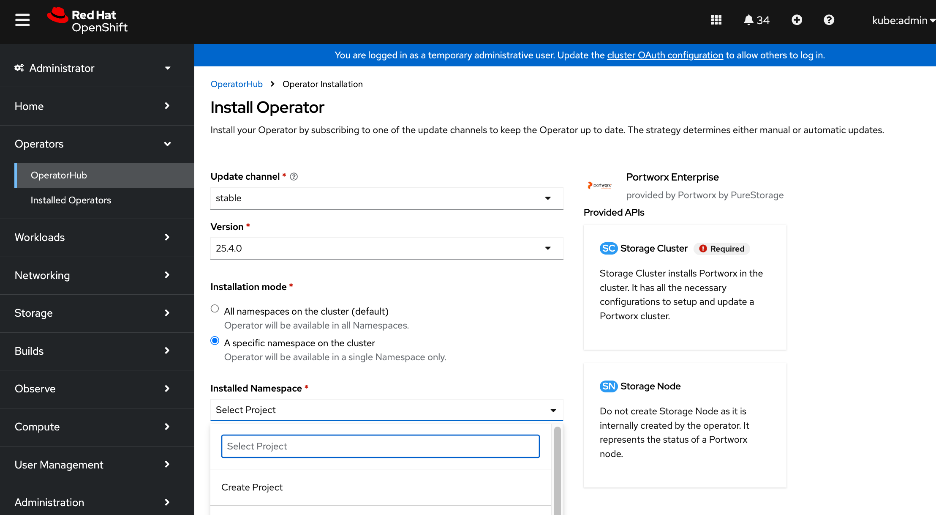

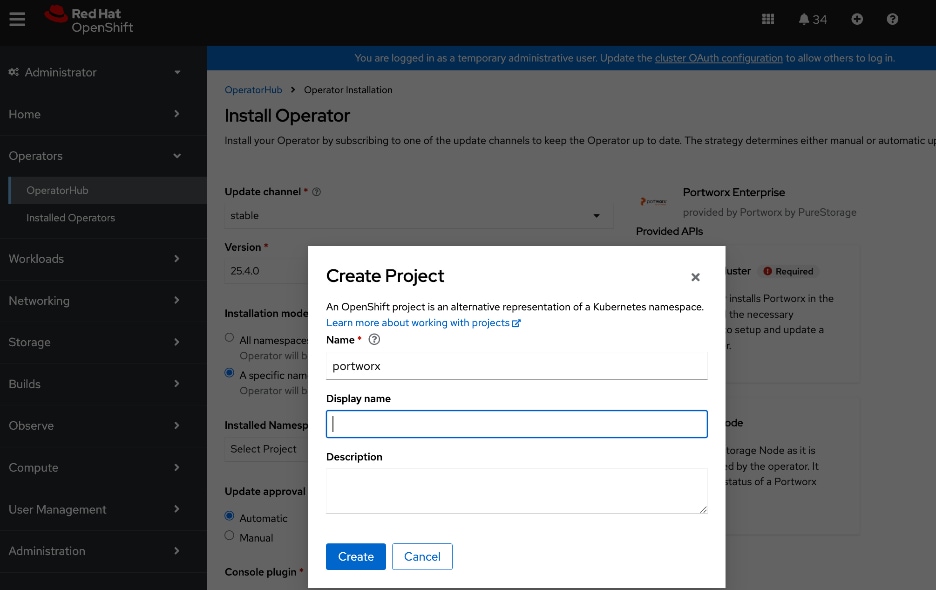

● Portworx Enterprise by Everpure Operator provides persistent storage for Kubernetes’ workloads within the Cisco AI POD. It functions as a CSI plugin to enable dynamic storage provisioning and management for AI pipelines running on Red Hat OpenShift. The operator automates the deployment, simplifying the lifecycle management of the storage environment as the cluster scales. Portworx also provides a dashboard that is directly accessible from the OpenShift cluster console to manage the environment.

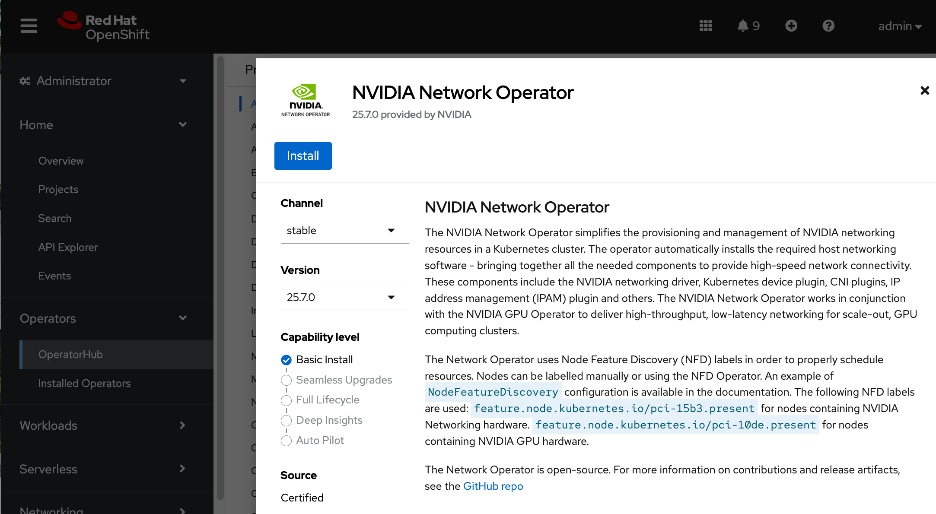

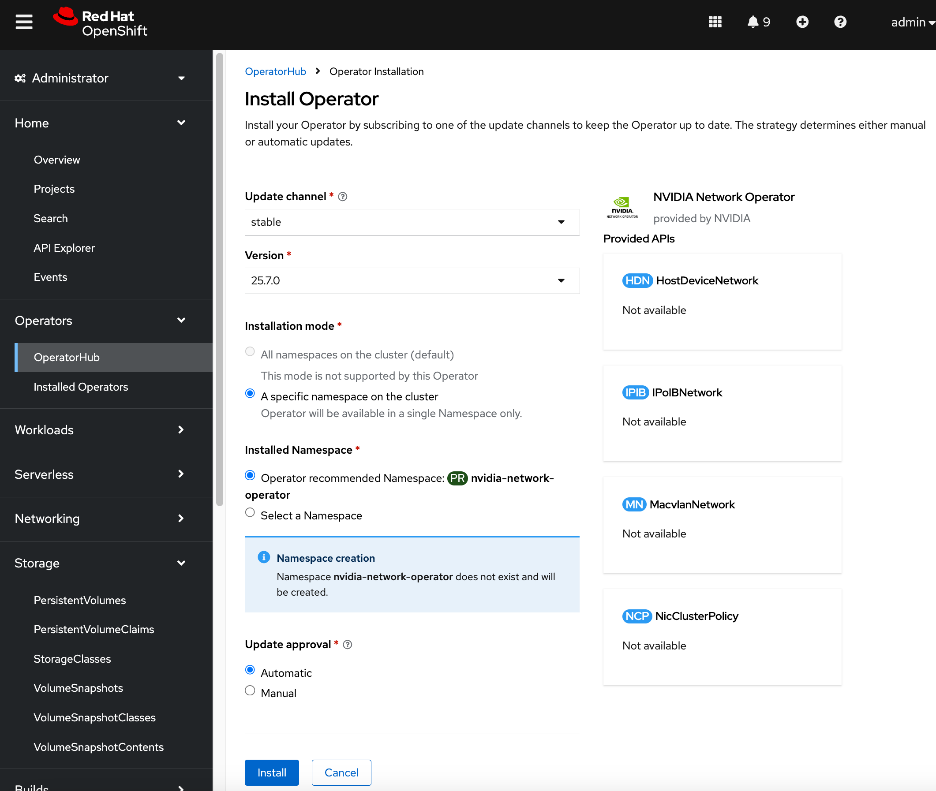

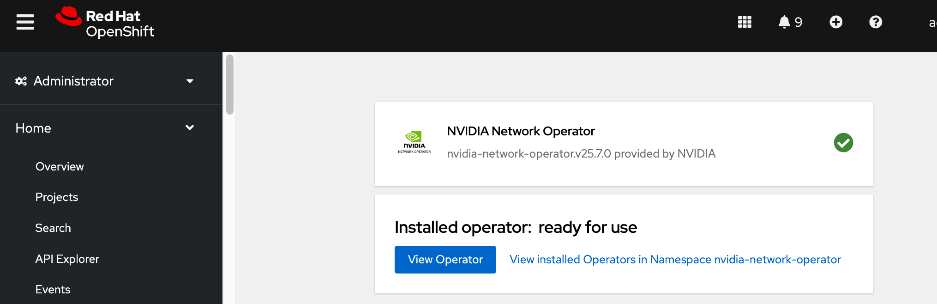

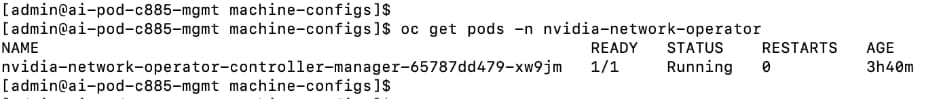

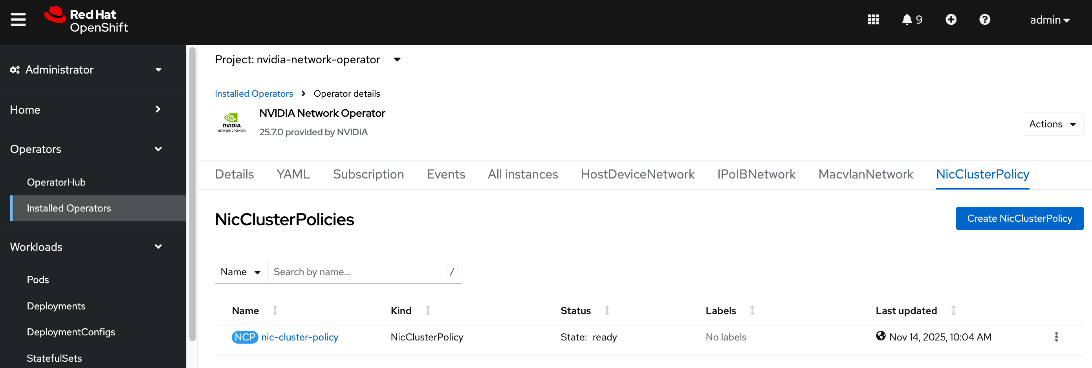

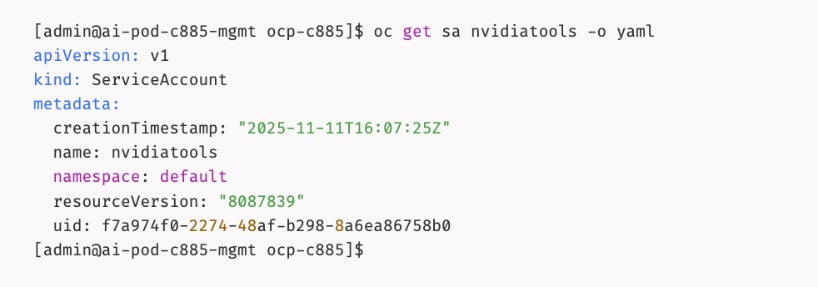

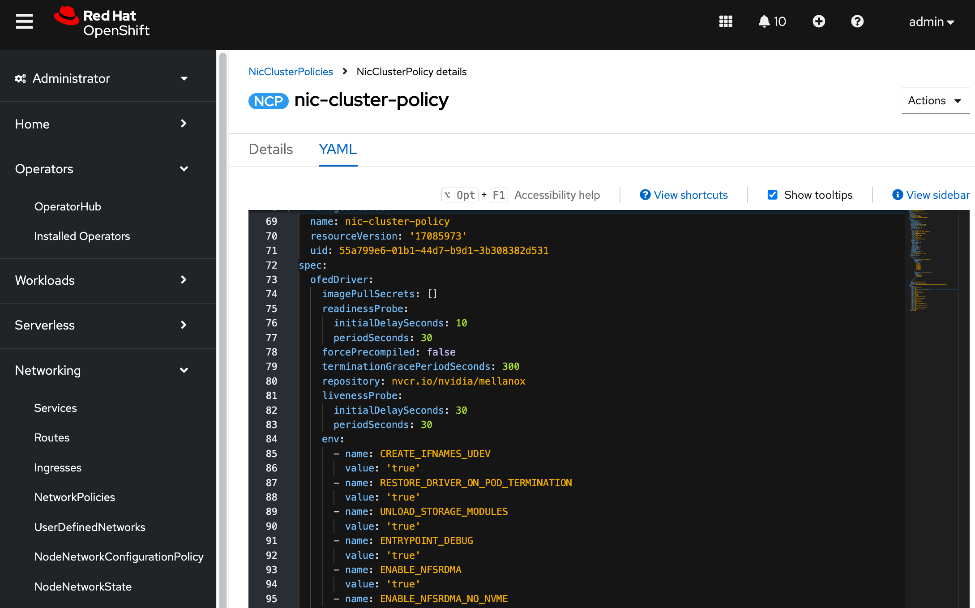

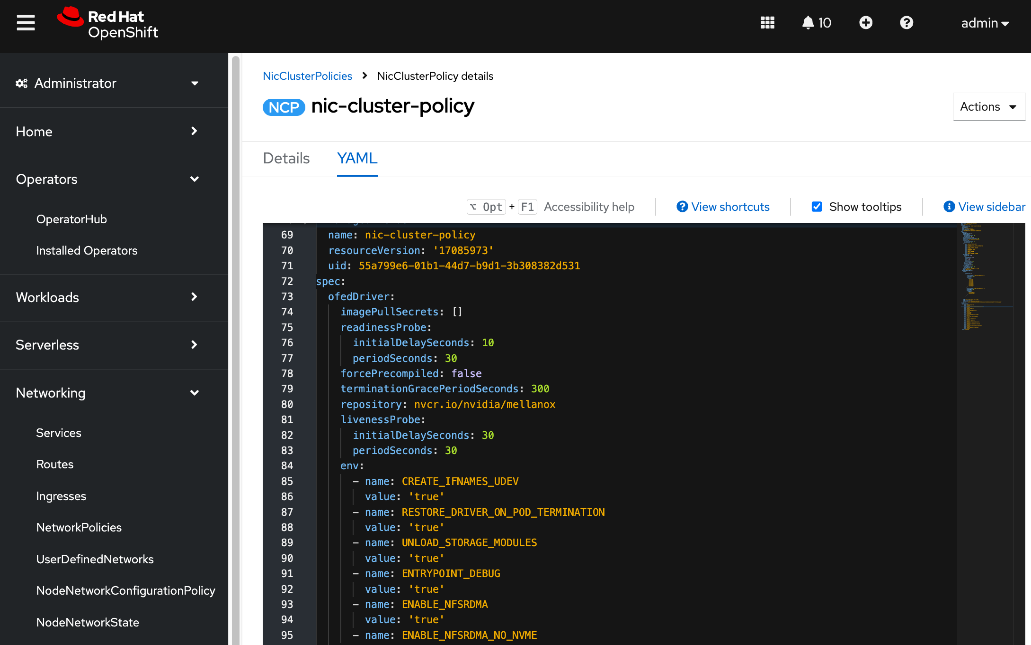

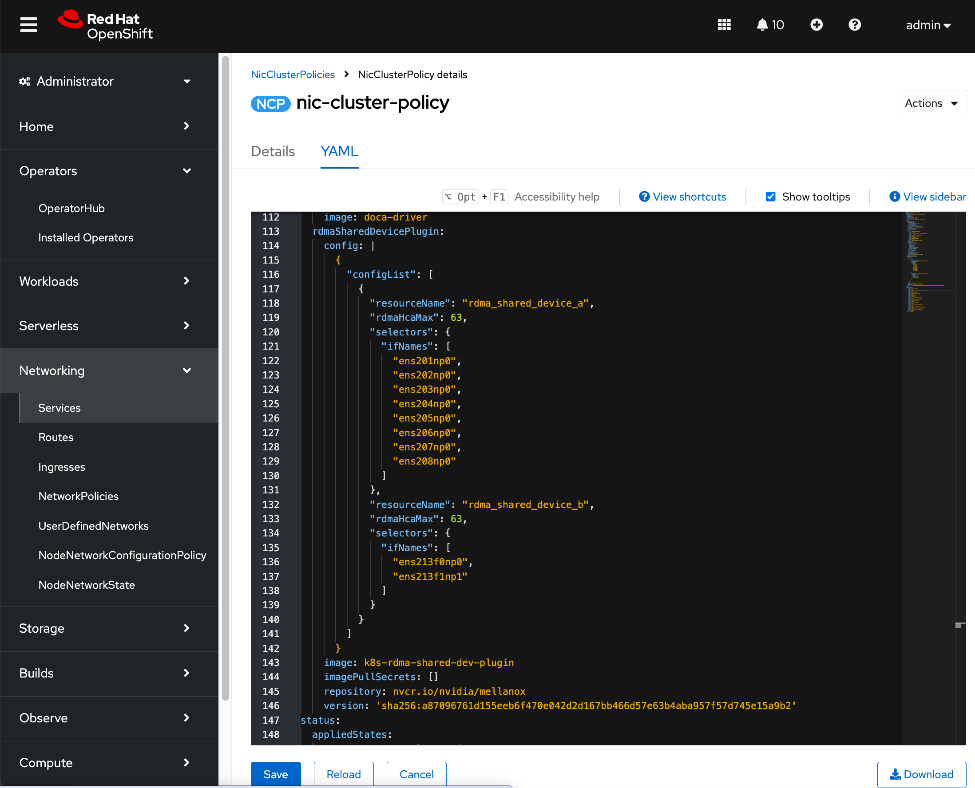

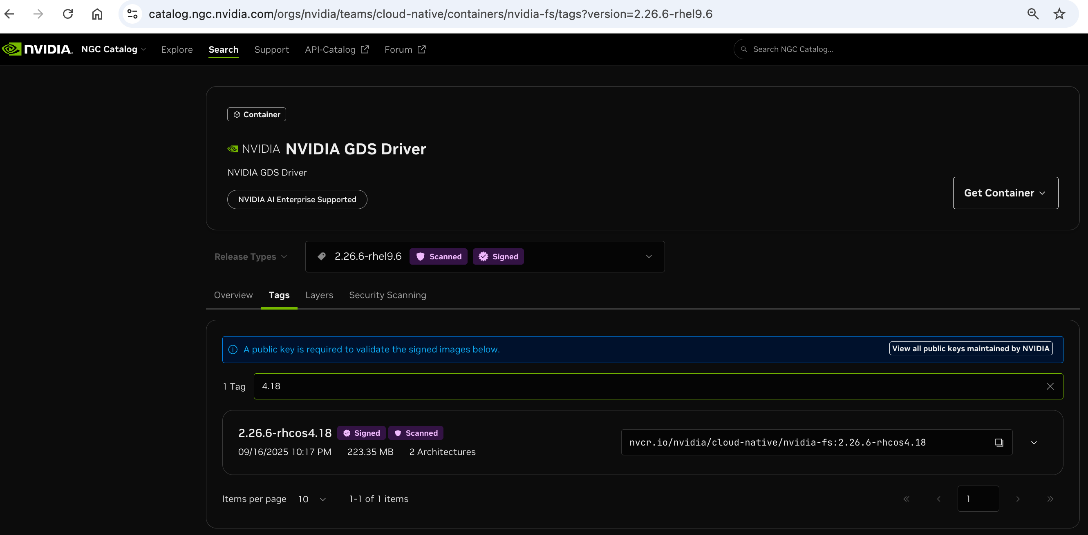

● NVIDIA Network Operator automates the provisioning of high-performance networking for the NVIDIA NICs in the UCS GPU nodes. By managing the installation of necessary drivers and libraries, such as MOFED, it enables low-latency communication between GPUs. The associated Network Cluster Policy is used to enable GPUDirect RDMA and GPUDirect Storage (GDS), ensuring the backend fabric is optimized for high-bandwidth AI workloads.

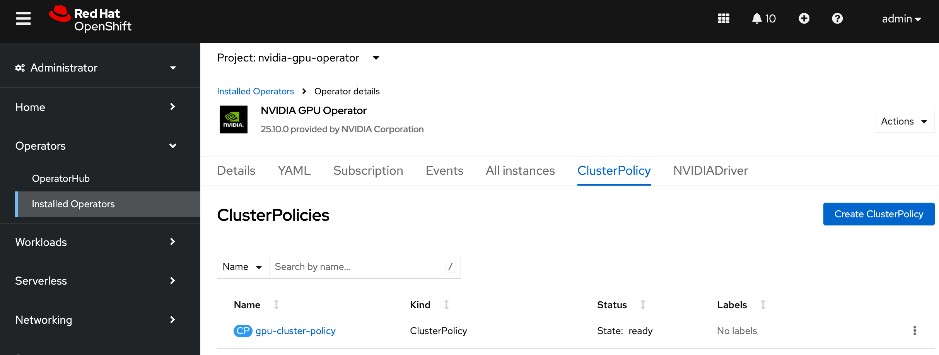

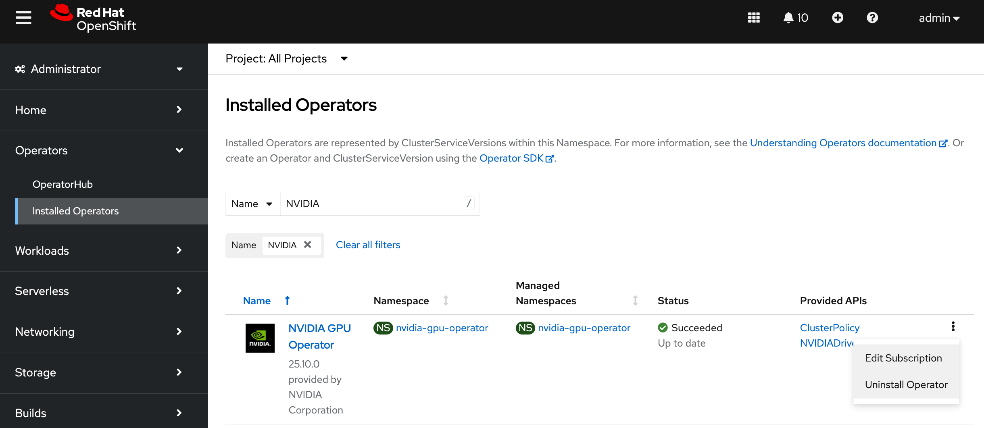

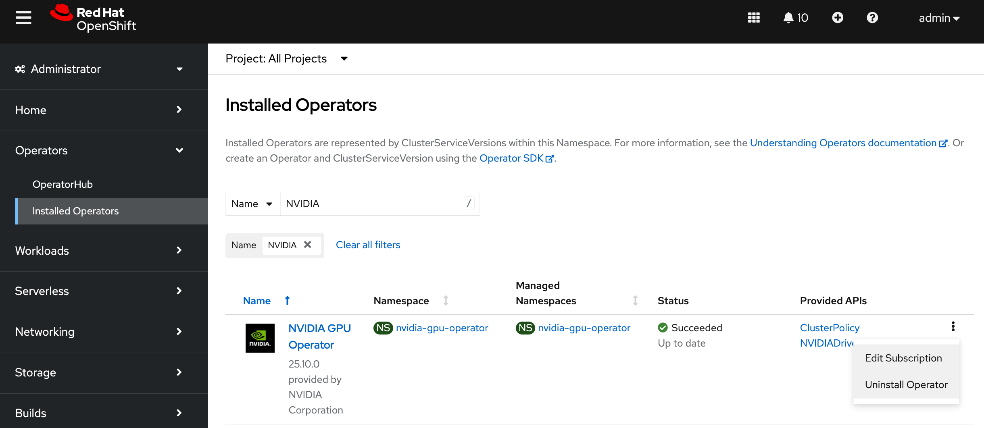

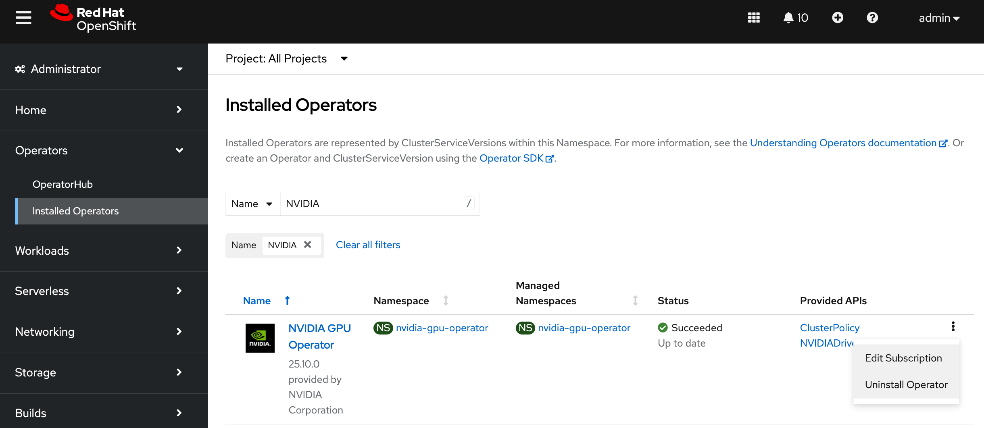

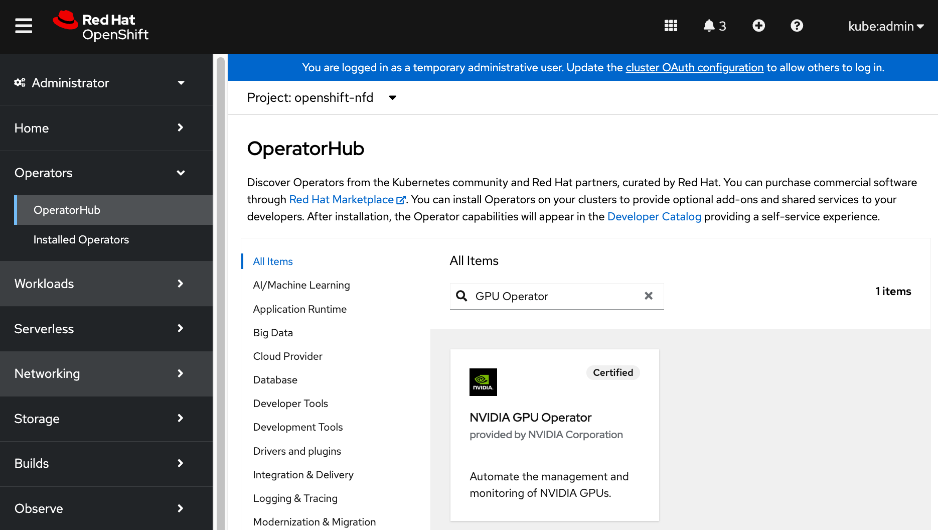

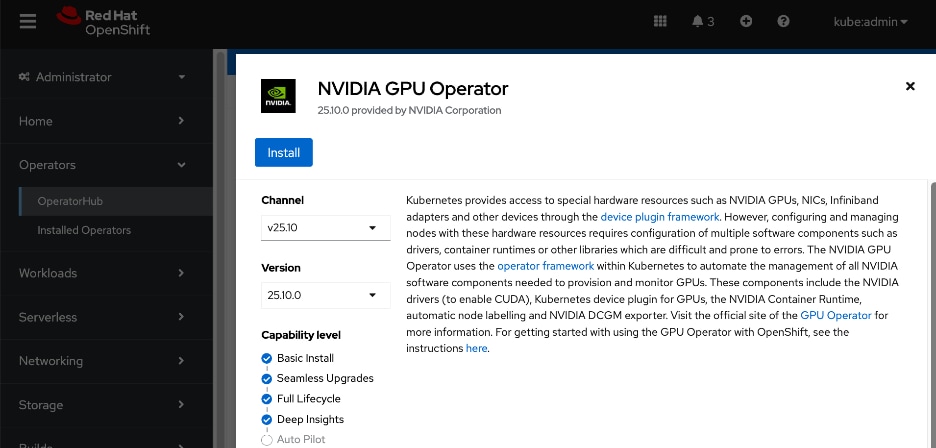

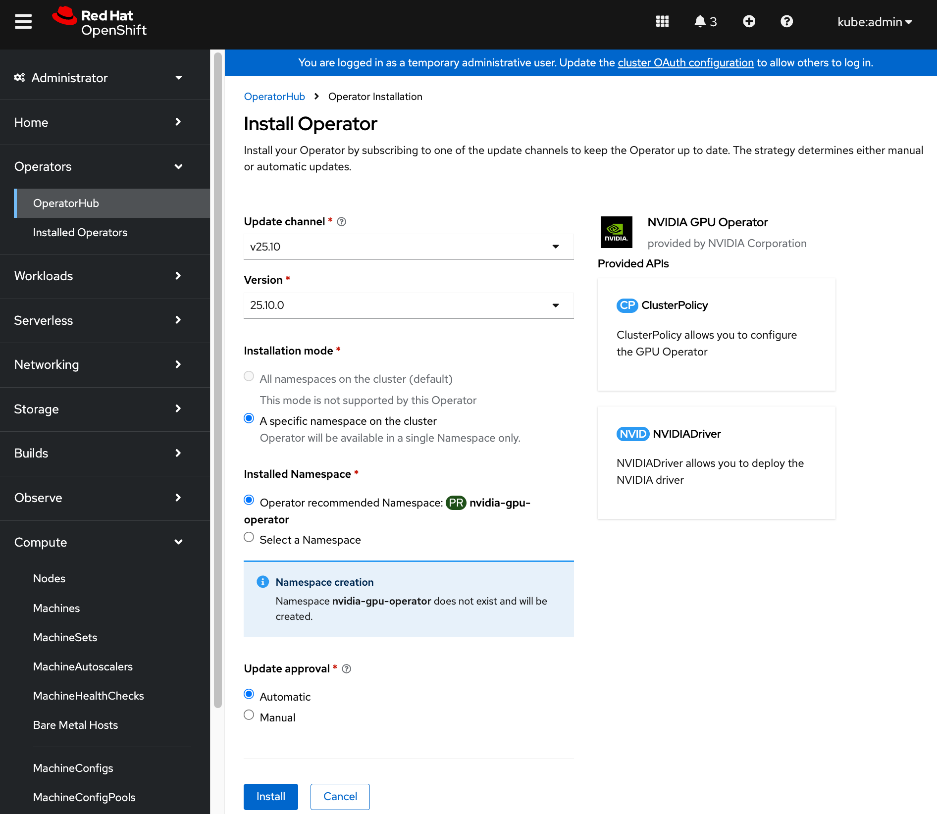

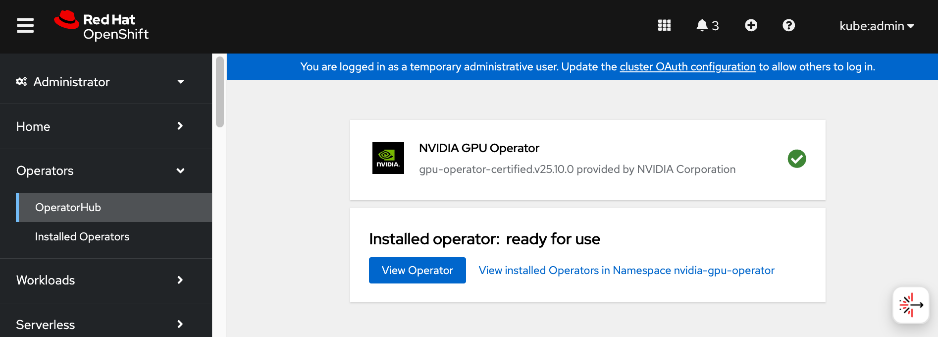

● NVDIA GPU Operator simplifies the management of NVIDIA GPUs by automating the installation of drivers, the container toolkit, and monitoring tools. It ensures that GPU resources are correctly exposed to the OpenShift scheduler and ready for AI tasks. The associated GPU Cluster Policy works in conjunction with the Network Operator to enable GPUDirect RDMA and GPUDirect Storage, allowing for direct data transfers between GPU memory and the network.

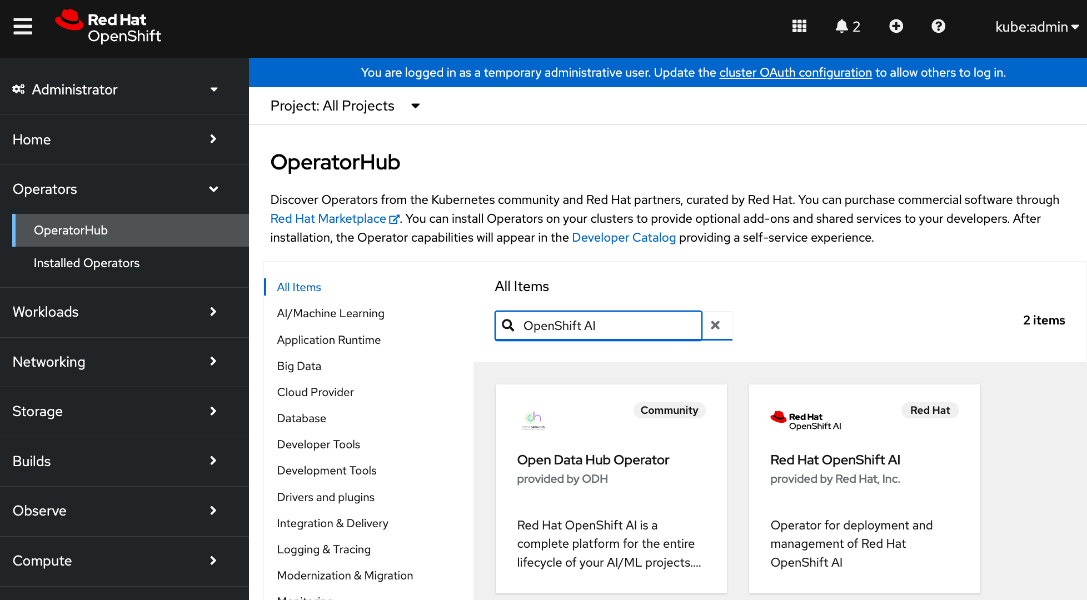

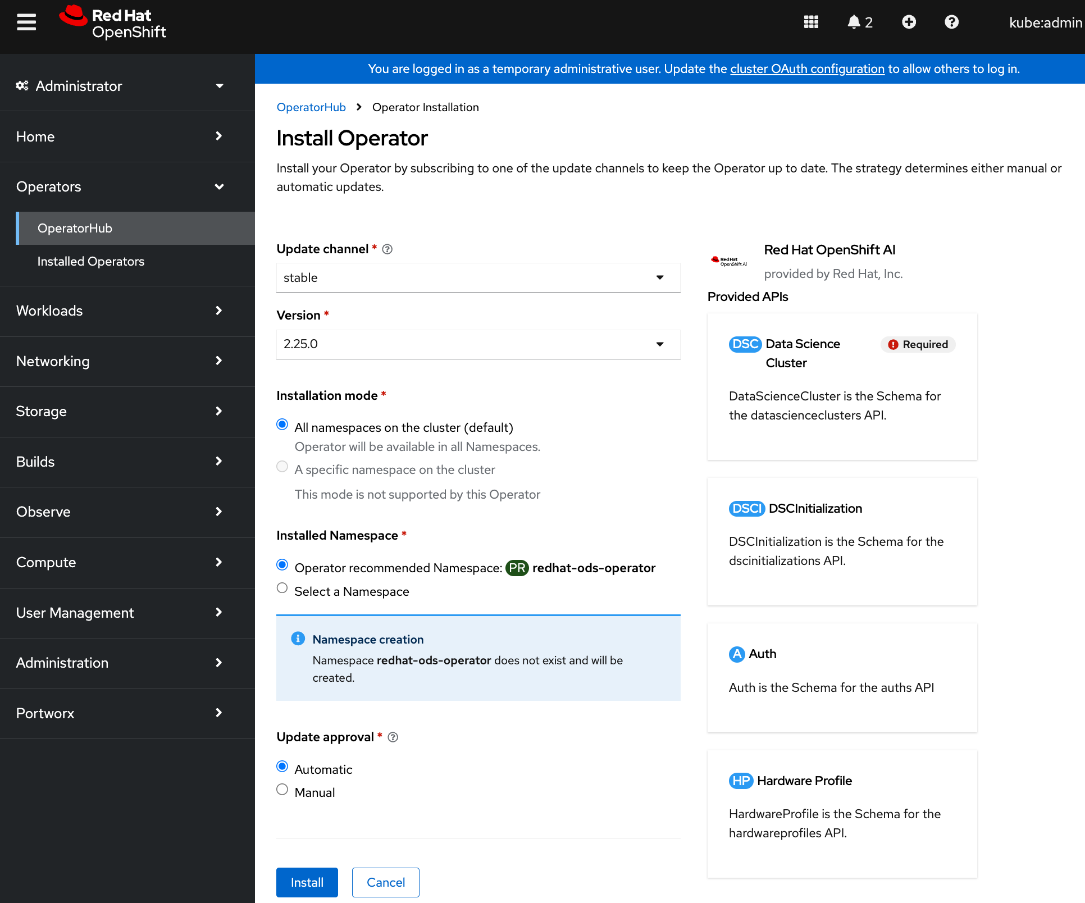

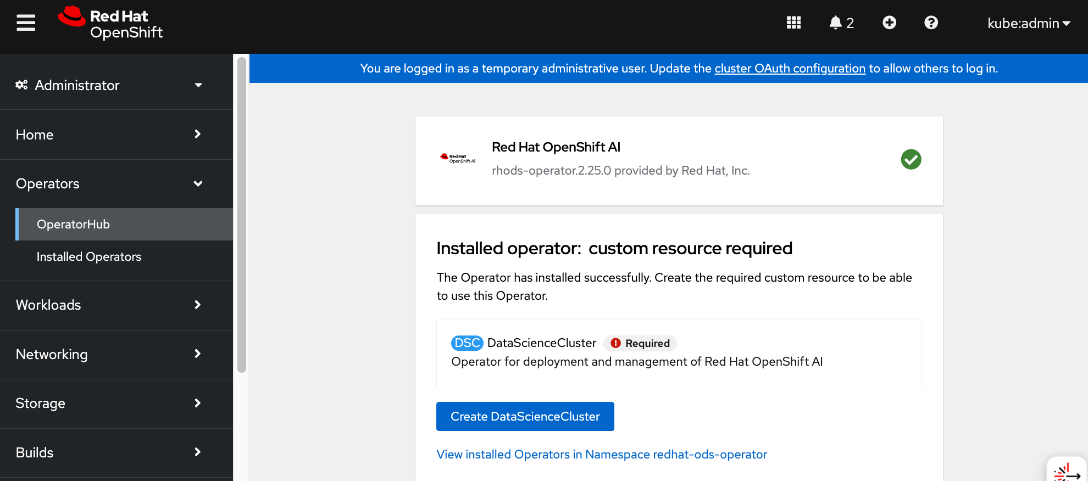

● Red Hat OpenShift AI Operator is used to deploy and enable a comprehensive MLOps environment for the cluster. It manages the full lifecycle of AI workloads—from model development and training to serving—and coordinates with additional operators to provide a seamless, end-to-end AI pipeline.

Deploying the NVIDIA Network Operator with a cluster policy enabled for RDMA will cause new drivers to be loaded on all NVIDIA NICs in the node, including the frontend NIC used as the OpenShift Cluster IP, resulting in a temporary outage during this time. It is recommended that you deploy a jump host with direct access to the cluster via Intel OCP NICs on the Cisco UCS C885A servers to ensure backup access to the cluster in the event of a problem.

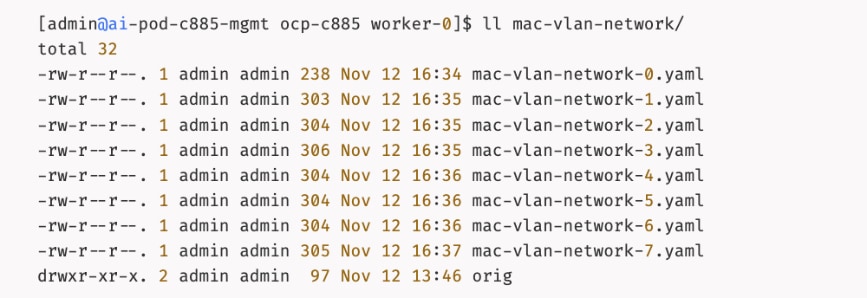

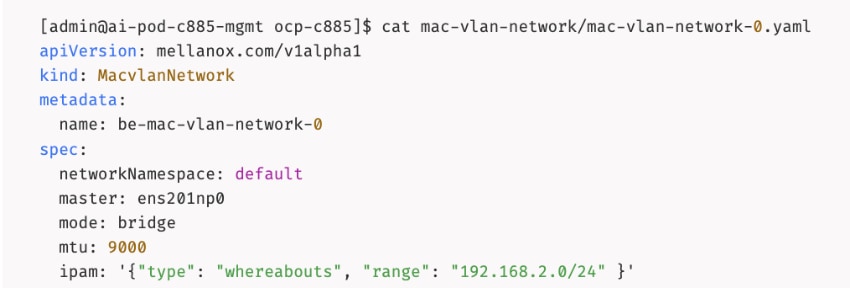

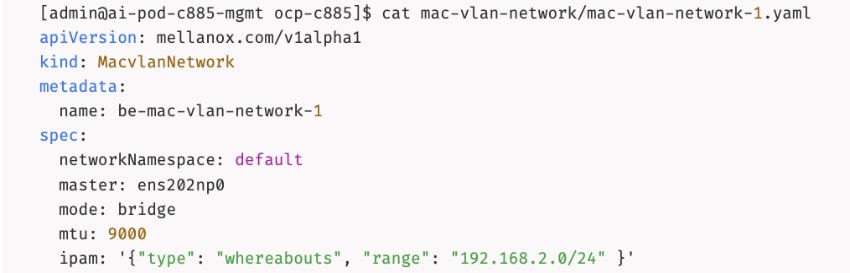

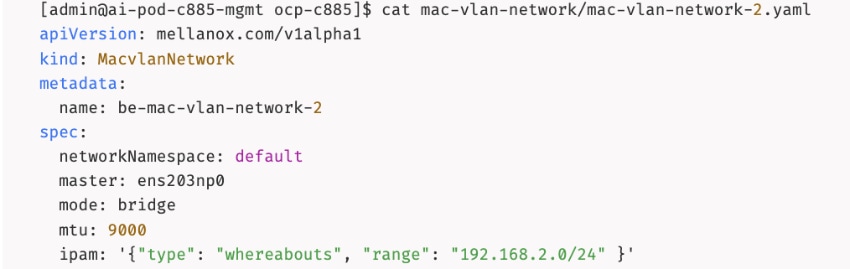

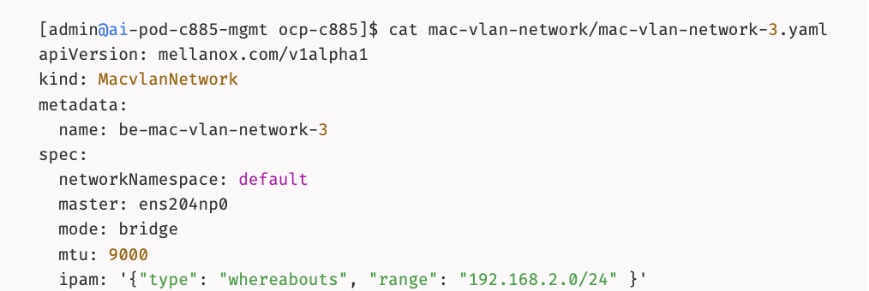

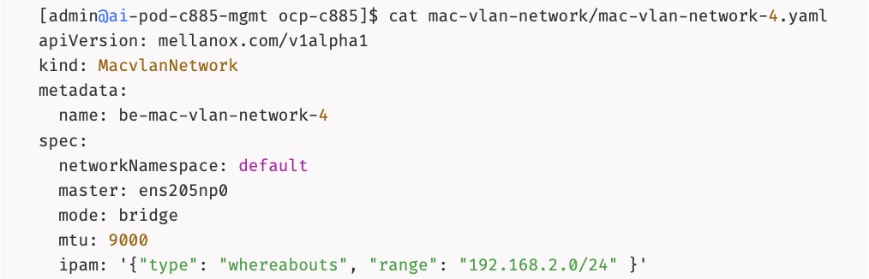

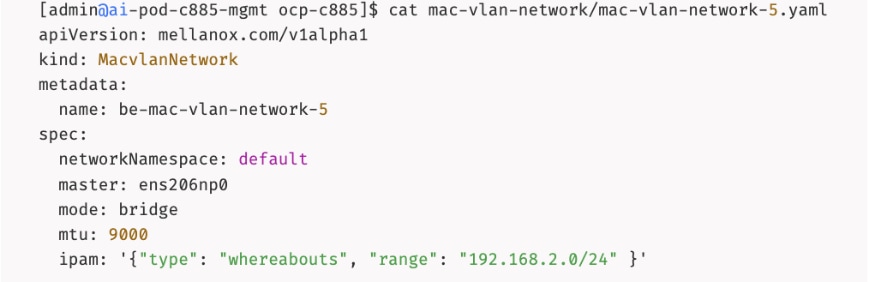

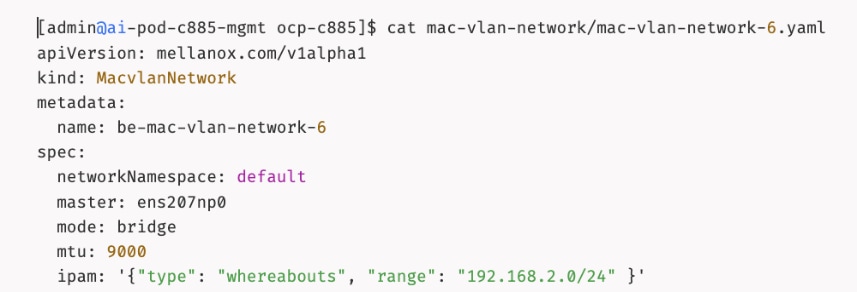

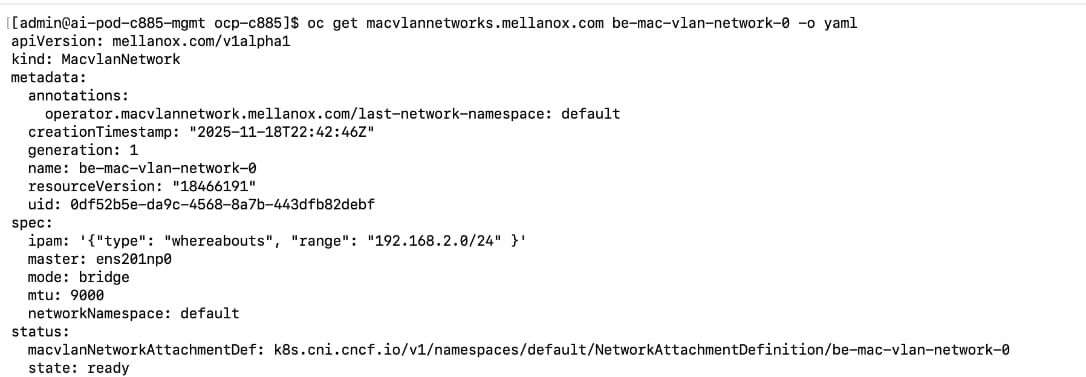

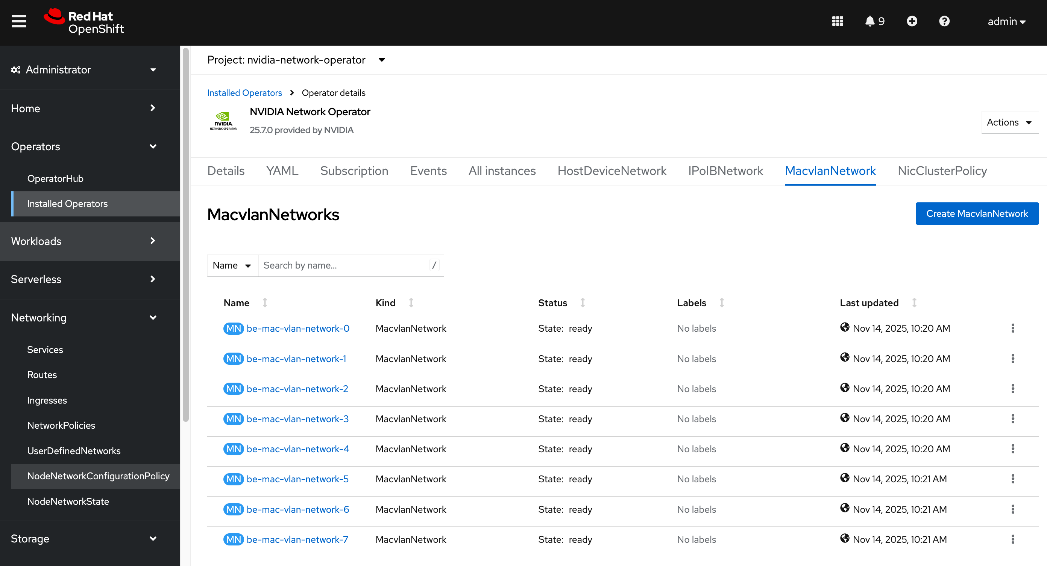

The AI POD design implements GPUDirect RDMA to accelerate distributed training and fine-tuning across the backend fabric and GPUDirect Storage for high-speed storage access via the frontend fabric. While this can be deployed using various methods, this CVD uses a multi-tenant approach that allows multiple pods on the same worker node to share the RDMA device (NIC). Specifically, a MacvlanNetwork Custom Resource Definition (CRD) is used to provision IP addressing and enable network access across the shared physical network interface for both the backend and frontend fabrics to support GPUDirect RDMA and GPUDirect Storage, respectively.

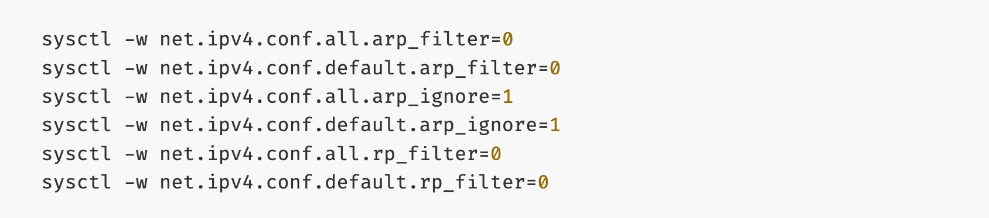

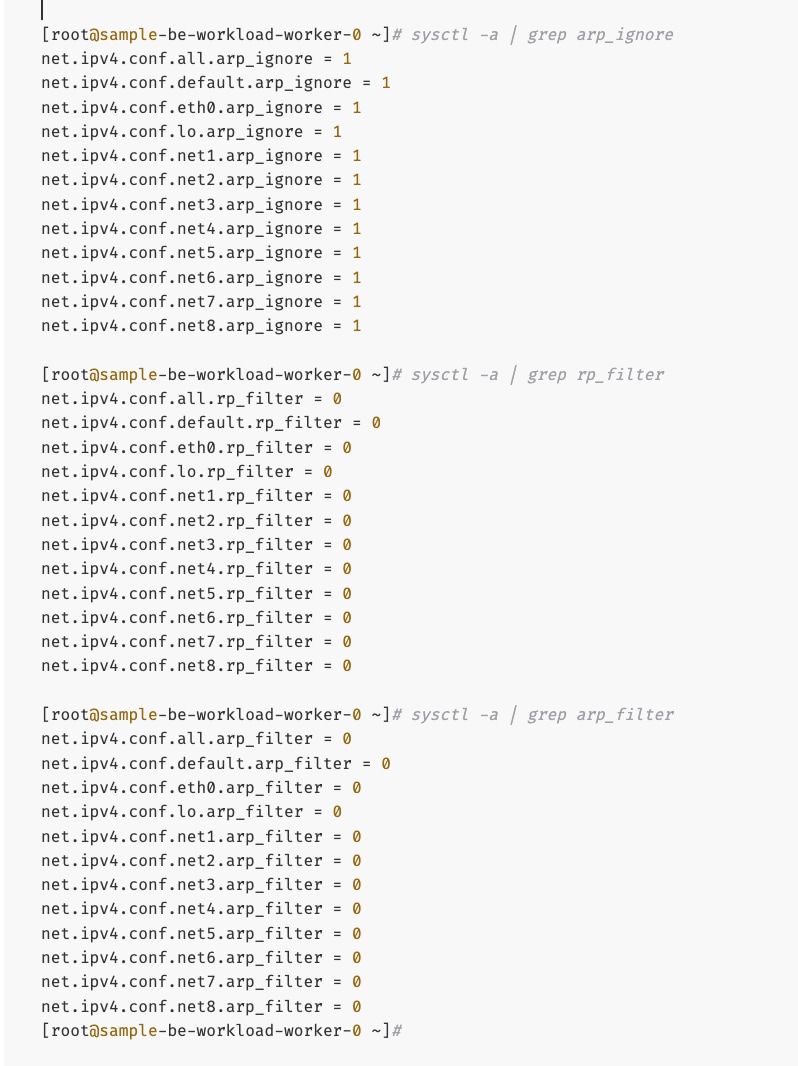

The Layer 2 (overlay) connectivity used for inter-node GPU communication between the 4 Cisco UCS C88A M8 nodes in the backend fabric also requires the following changes to be implemented on node.

Solution Deployment

This section details the deployment of the specific AI POD design discussed in the previous section, based on validation in Cisco labs. This chapter contains the following:

Deploy Frontend Fabric using Nexus Dashboard

Deploy Backend Fabric using Nexus Dashboard

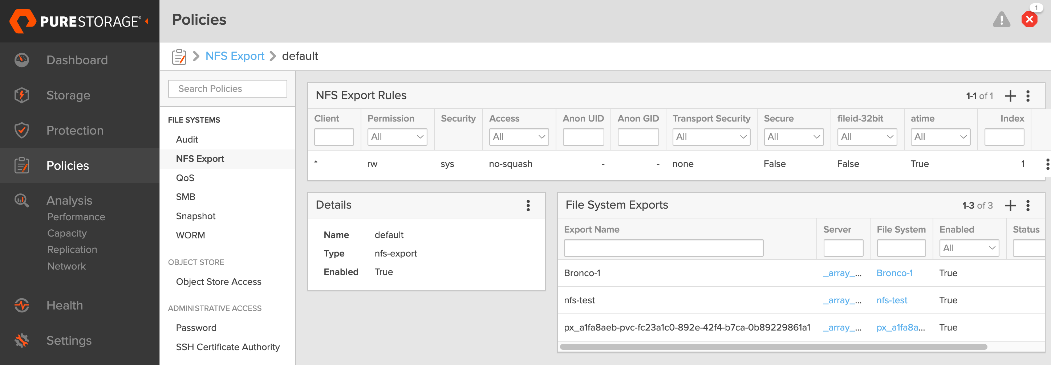

Deploy NFS Storage on Everpure FlashBlade

Deploy Object Store on Everpure FlashBlade

Deploy UCS Management Nodes from Cisco Intersight

Deploy Red Hat OpenShift on UCS Servers

Initial Setup of Cisco UCS C885A GPU Servers

Add Cisco UCS C885A GPU Servers to OpenShift Cluster

Set up Networking for Storage Access

Deploy Portworx to provide Persistent Storage

Set up Portworx for NFS over TCP Access to Storage

Deploy GPUDirect RDMA on Backend Fabric

Set up Portworx for NFS over RDMA Access to Storage

Set up Portworx for GPUDirect Storage

Deploy Red Hat OpenShift AI for MLOps

This section provides a high-level overview of the steps involved in deploying the end-to-end solution. The sections that follow will provide the detailed procedures for each step. A summary view of these implementation steps are provided in Table 6.

| Steps |

Deployment Action |

| CVD_01 |

Deploy a 3-node Nexus Dashboard (ND) Cluster

● Set up the first node in a 3-node Nexus Dashboard cluster

● Use the cluster bring up workflow to (1) complete the setup of the first node, (2) deploy remaining nodes in the cluster and (3) bring up the 3-node ND cluster.

|

| CVD_02 |

Deploy Frontend (FE) Network Fabric

● Use Nexus Dashboard blueprint/template to deploy a VXLAN EVPN Fabric on the FE switches connected in a 2-tier Spine-Leaf topology

● Enable Virtual Port-Channel (vPC) peering on Compute/Management leaf pairs in FE fabric.

● Enable vPC peering on Storage Leaf switch pairs to connect to Everpure XFM modules

● Enable Layer 2 Connectivity to Management UCS X-Direct from FE fabric

● Enable Layer 2 Connectivity to UCS GPU Nodes from FE Fabric

● Enable IB-MGMT Connectivity for Cisco UCS (GPU + Management) Nodes

● Enable Layer 2 Connectivity to Everpure FlashBlade//S from FE Fabric

● Enable NFS Storage Data Access to Everpure FlashBlade//S

● Enable S3-compatible Object Store Data Access to Everpure FlashBlade//S

● Enable external connectivity from the FE fabric to access SaaS services (Cisco Intersight, Red Hat Hybrid Cloud Console) and other services outside this fabric

● Enable QoS for storage RDMA traffic in the FE fabric

|

| CVD_03 |

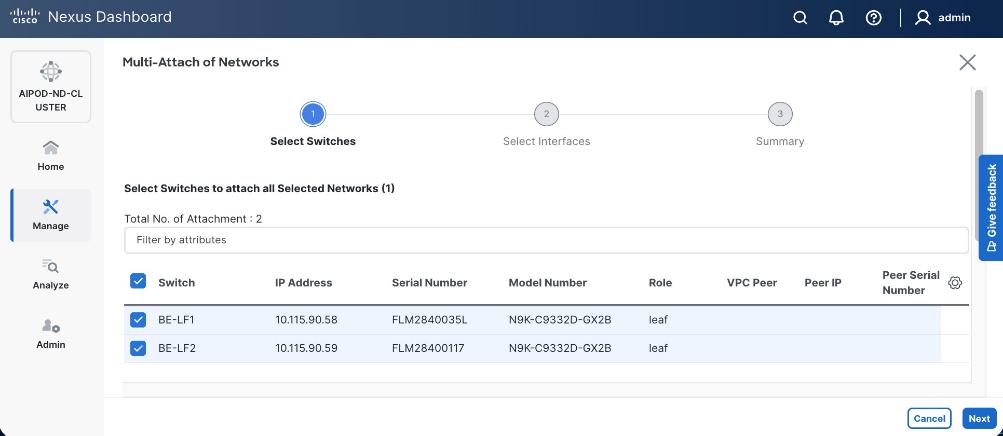

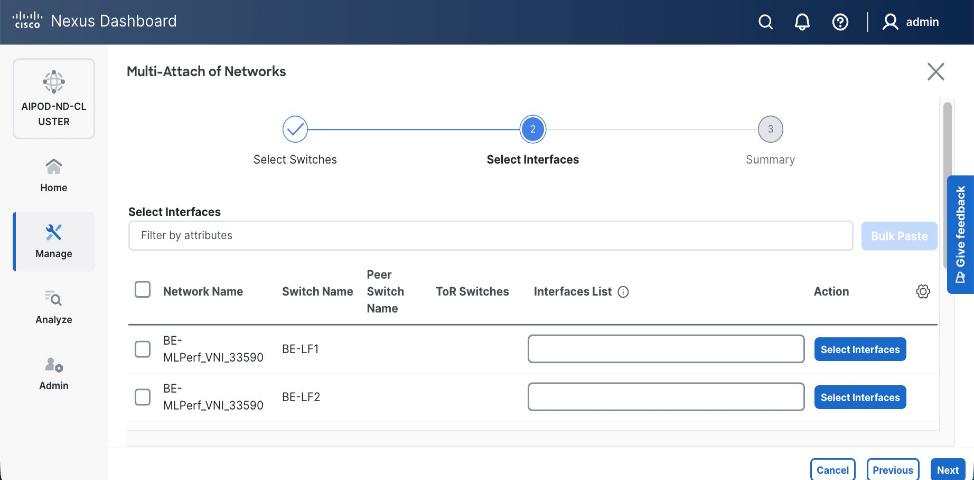

Deploy Backend (BE) Network Fabric

● Use Nexus Dashboard blueprint/template to deploy a VXLAN EVPN Fabric on the BE switches connected in a 2-tier Spine-Leaf topology

● Enable QoS for inter-node GPU-to-GPU RDMA traffic in the BE fabric

● Enable GPU-to-GPU networking between UCS GPU nodes across the BE fabric using a Layer 2 overlay

|

| CVD_04 |

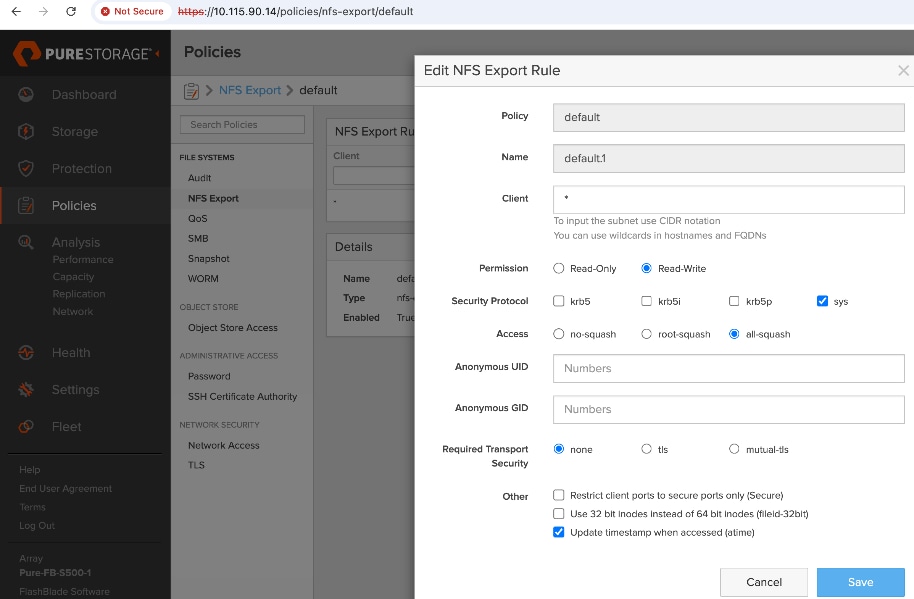

Deploy NFS Storage on Everpure FlashBlade//S

● Prepare Hardware: Coordinate site power/cooling and complete the Installation Workbook to define your network parameters.

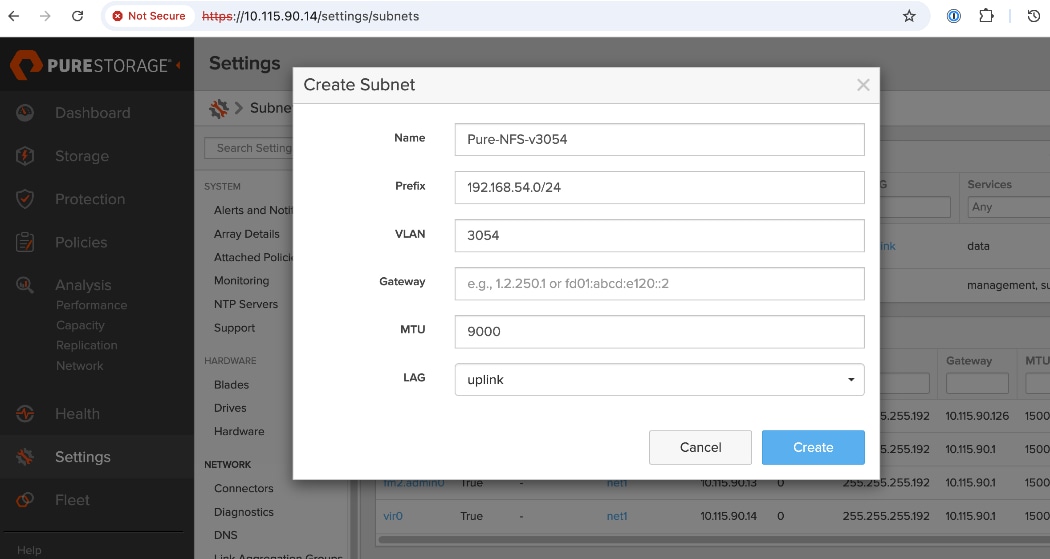

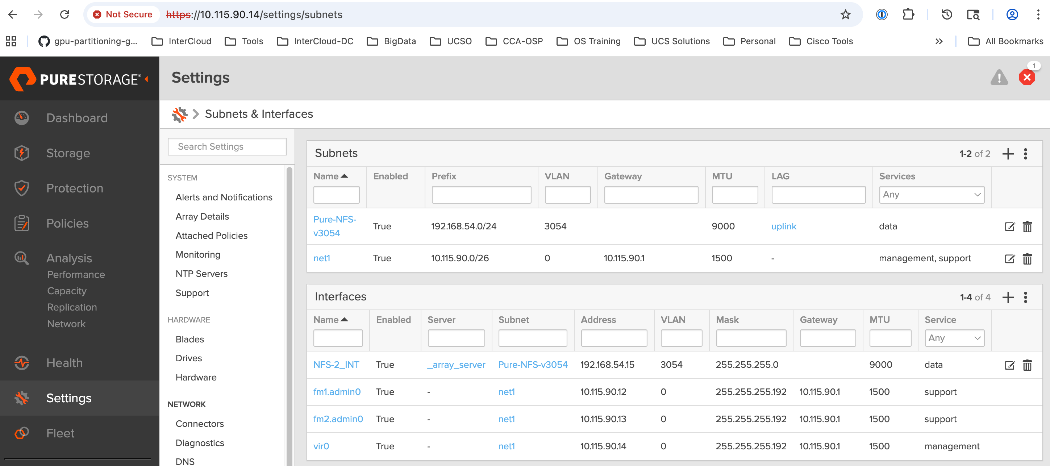

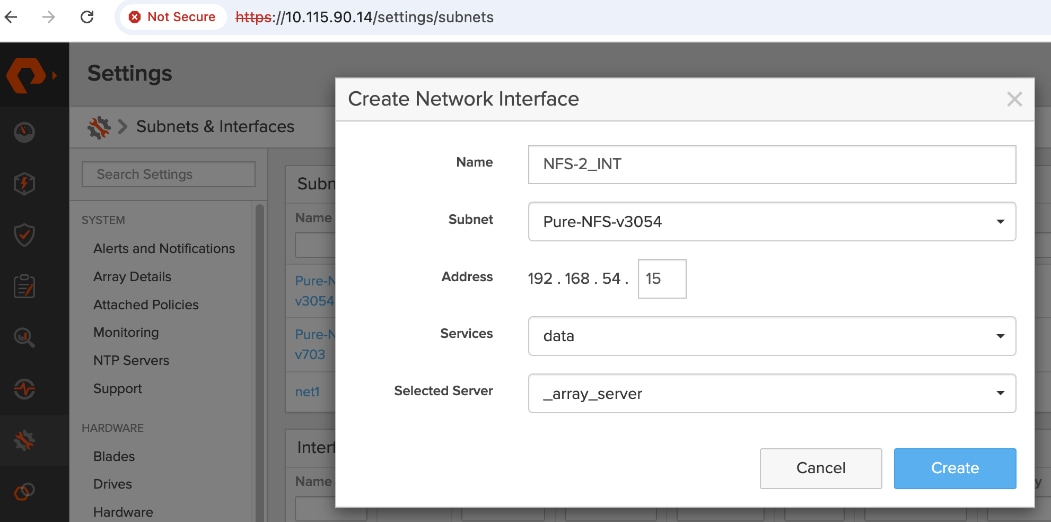

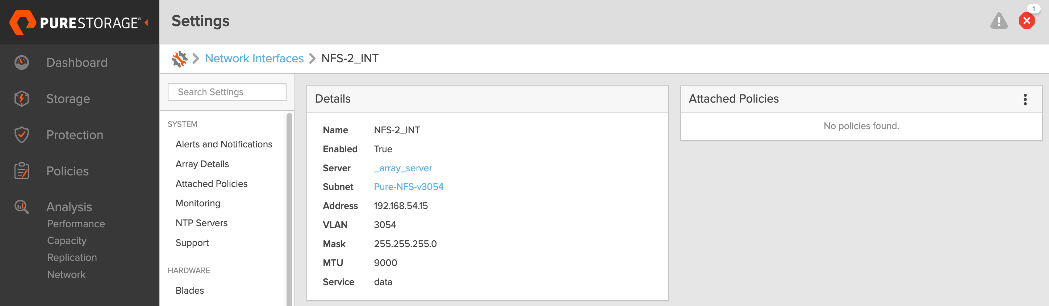

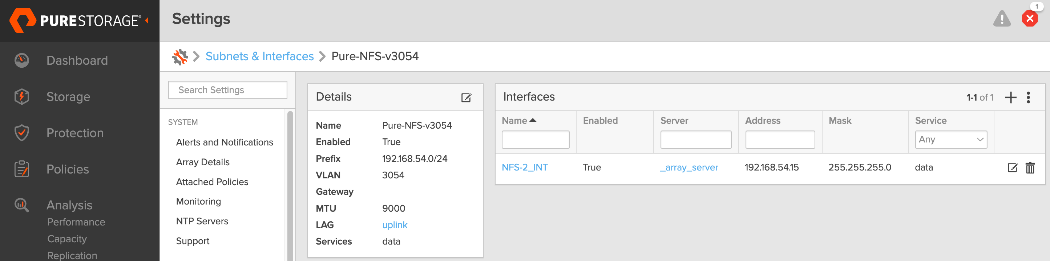

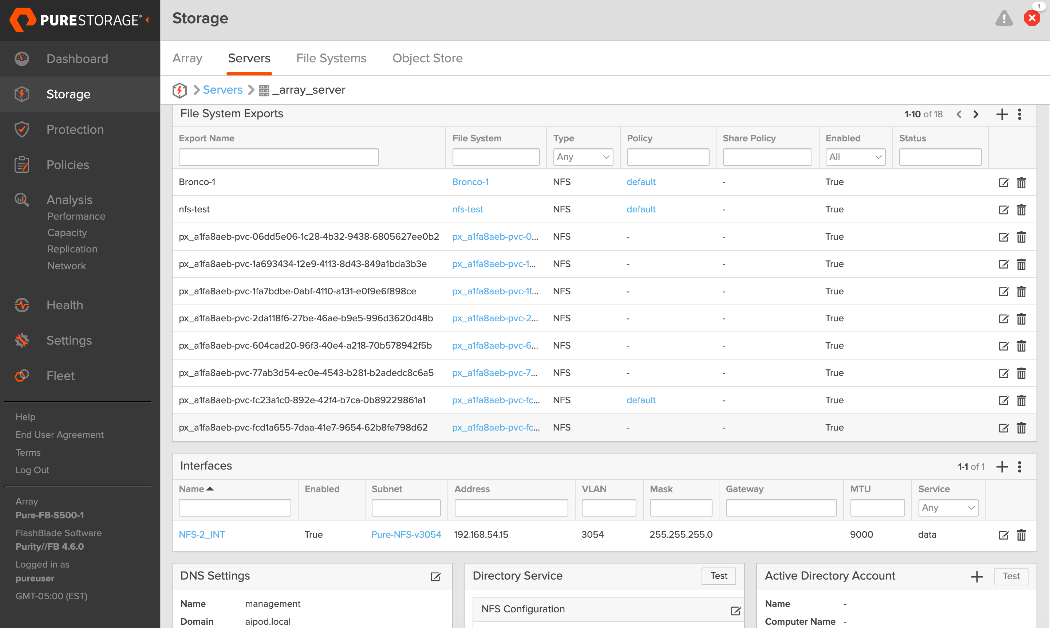

● Configure Networking: Log into the FlashBlade CLI or portal to create the required subnets and two specific DataVIPs for pod connectivity.

● Provision NFS Storage: Create the filesystem with your desired capacity and enable the NFSv3 protocol.

● Route and Mount: Verify routing from compute nodes to storage using the NFS data interface. Mount the filesystem using NFS over RDMA with aggregate mount options.

● Validate Installation: Run the

mountmount command on compute nodes to confirm the filesystem is active with the correct RDMA and

nconnectnconnect settings.

|

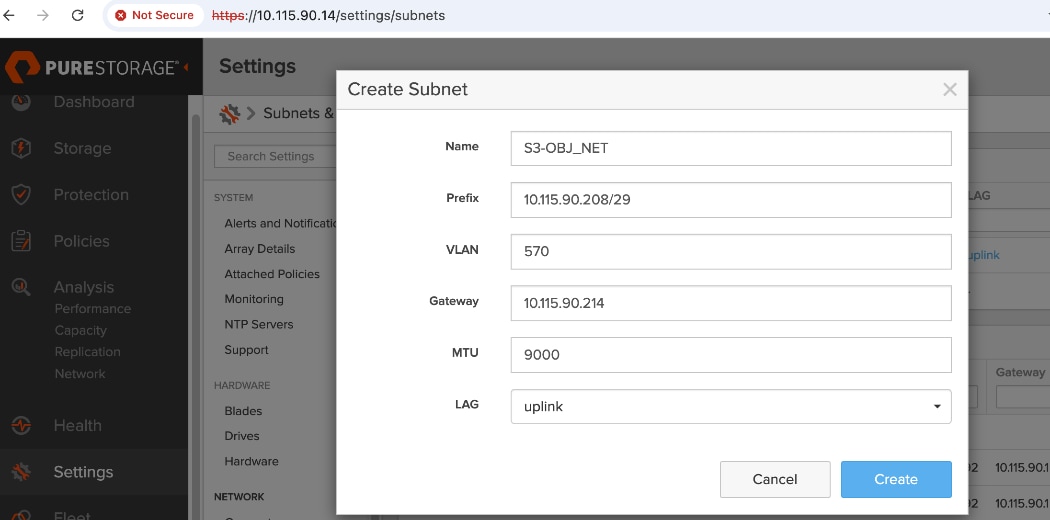

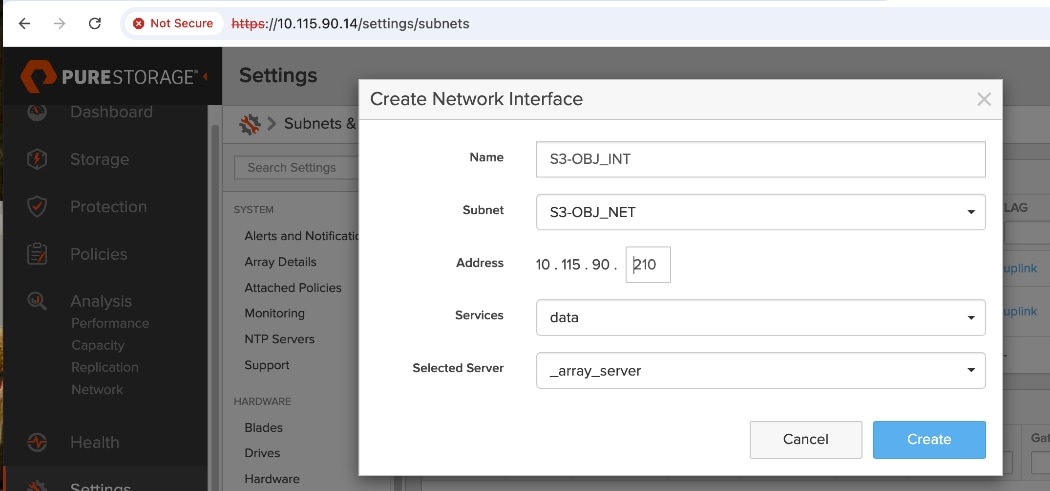

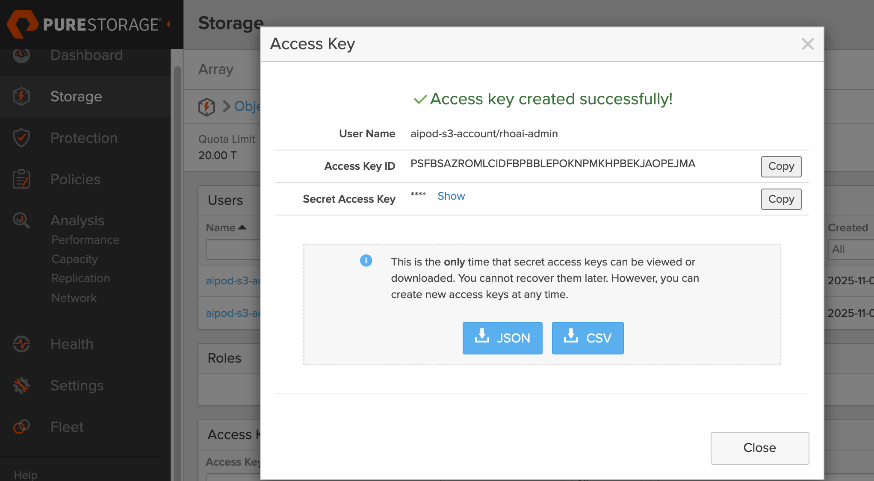

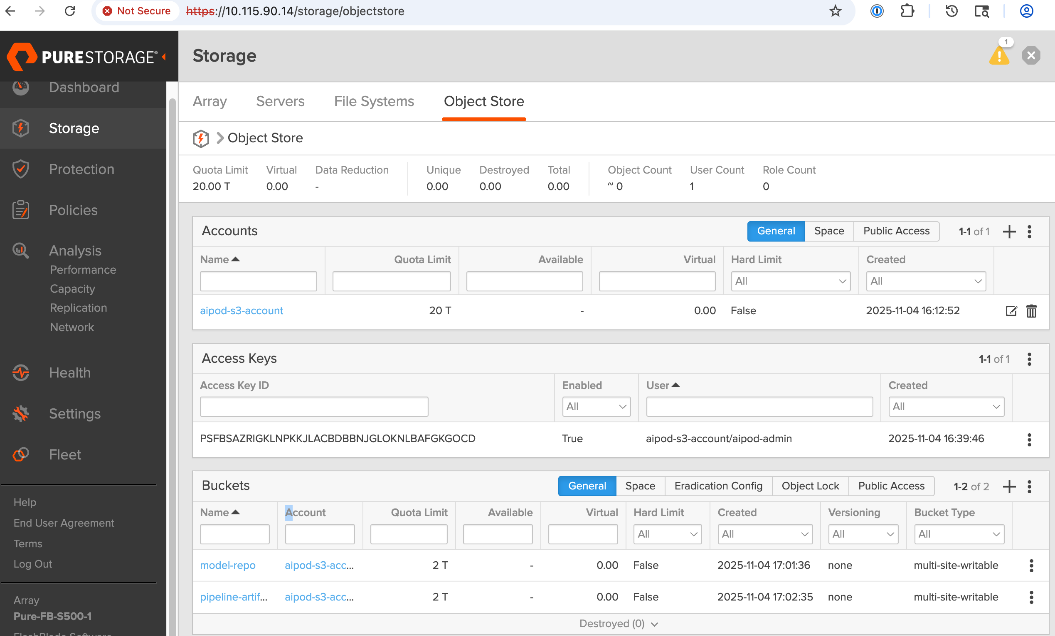

| CVD_05 |

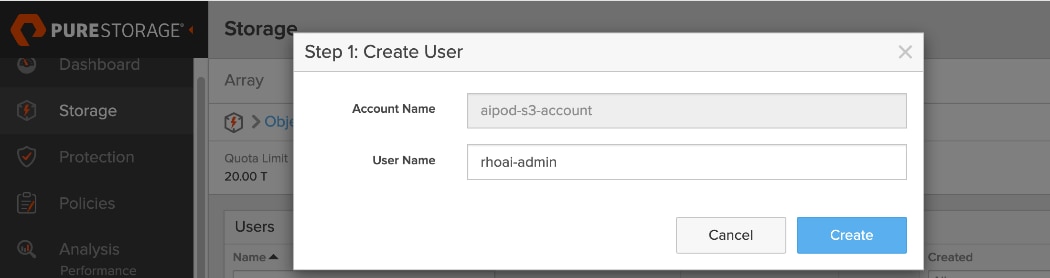

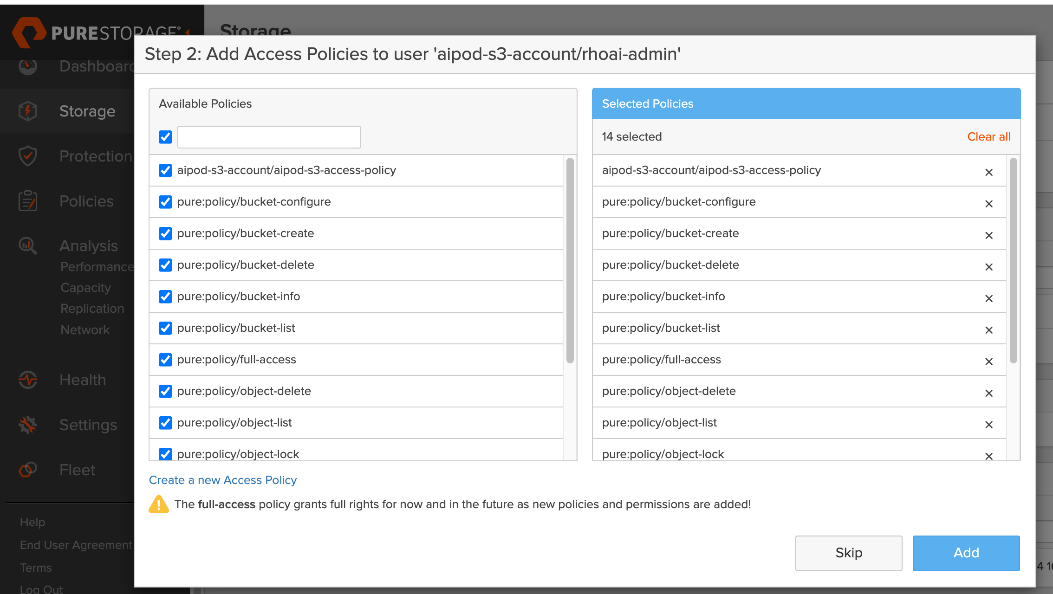

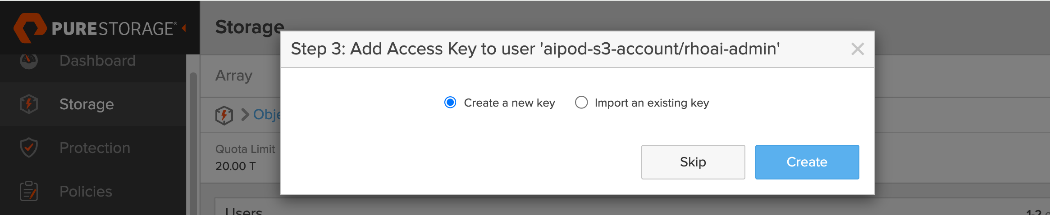

Deploy S3-compatible Object Store on Everpure FlashBlade//S

● Configure Networking: Log into the FlashBlade CLI or portal to create the required subnets and specific DataVIPs for pod connectivity.

● Provision Object Store: Create an account and quota for the account. Create user, access policies, S3 buckets and access key to access the S3 buckets.

● Route: Verify routing from compute nodes to storage using the S3 Object data interface. Deploy workload to verify access.

|

| CVD_06 |

Deploy UCS Management Servers from Cisco Intersight

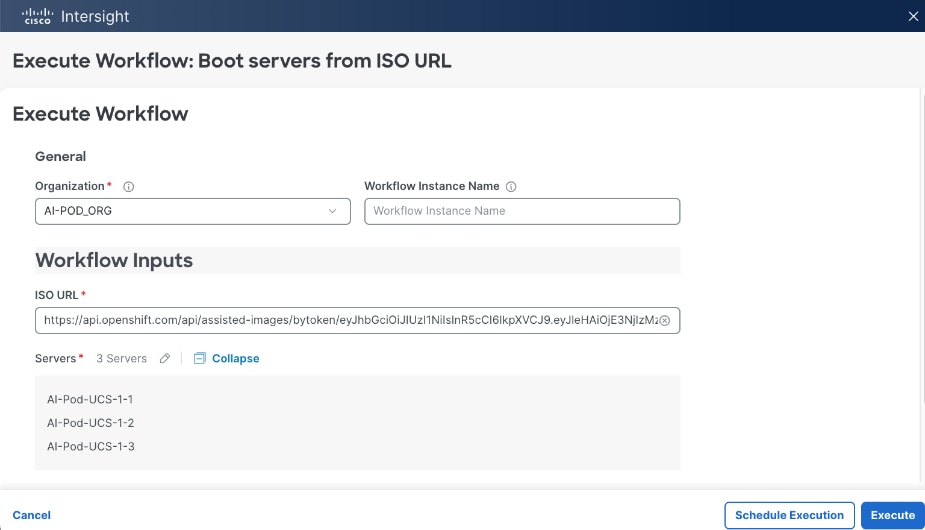

● Create Server Profile Template for UCS servers for use as OpenShift control (optionally as worker nodes) in the OpenShift cluster with Cisco UCS C885A GPU nodes. Servers should have at least one interface provisioned in the cluster management network (same as in-band management in this deployment).

● Deploy server profile to provision a minimum of 3 servers to provide high availability.

|

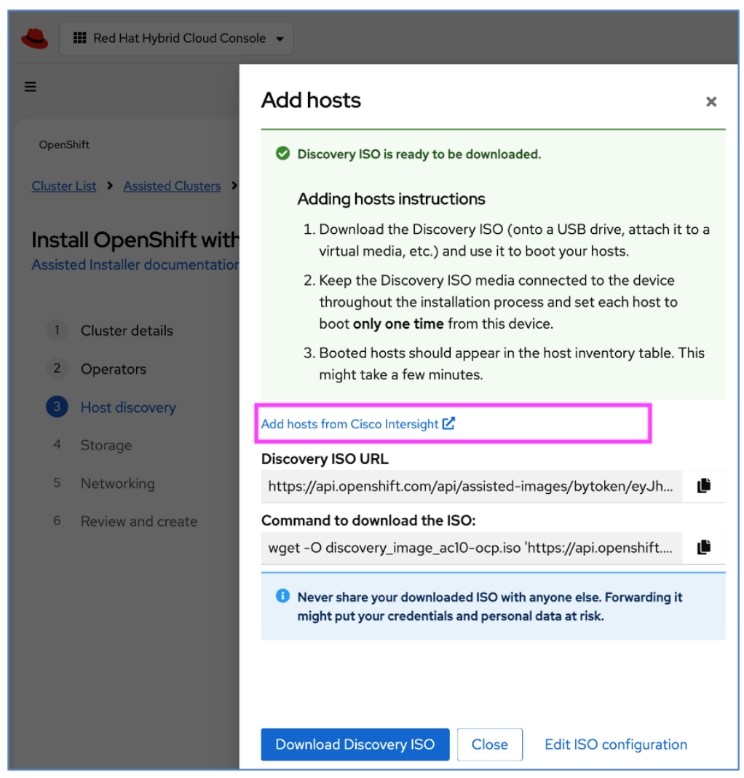

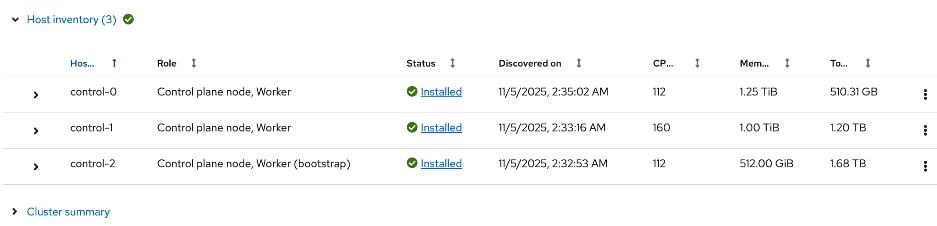

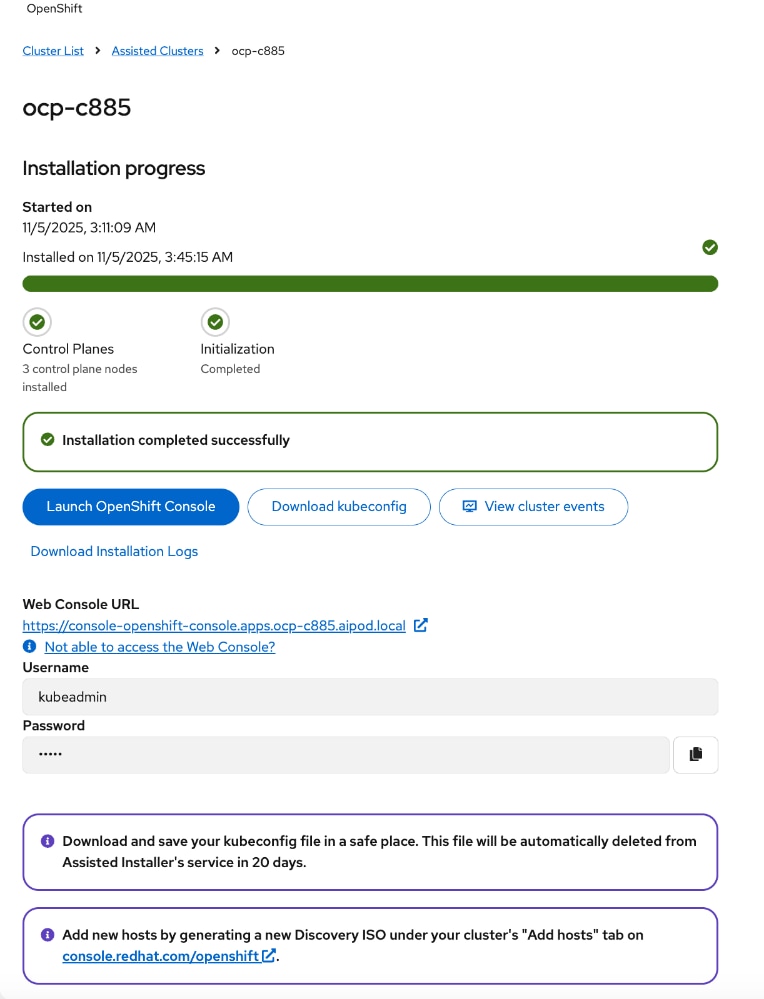

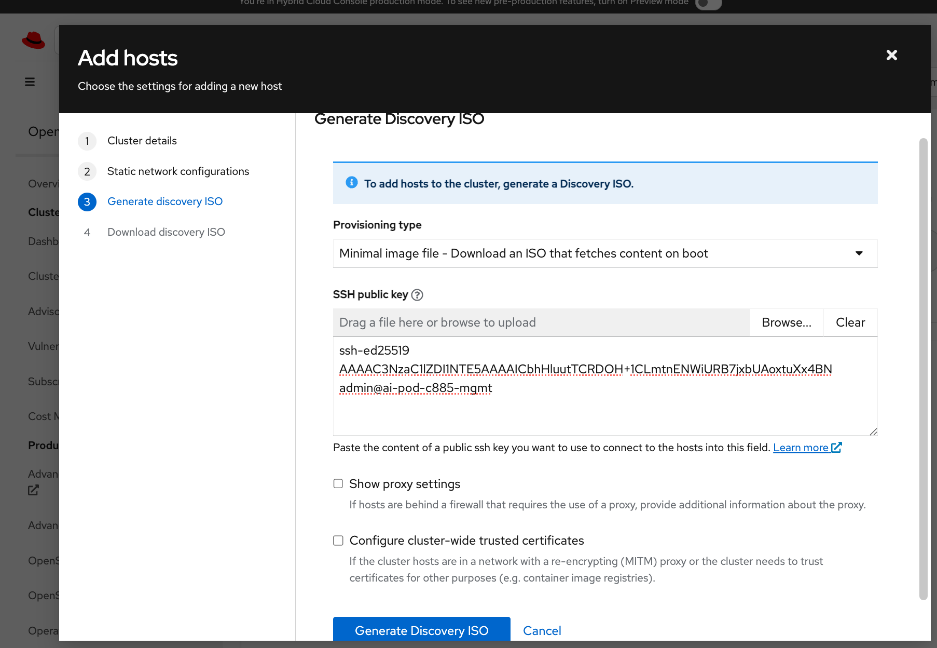

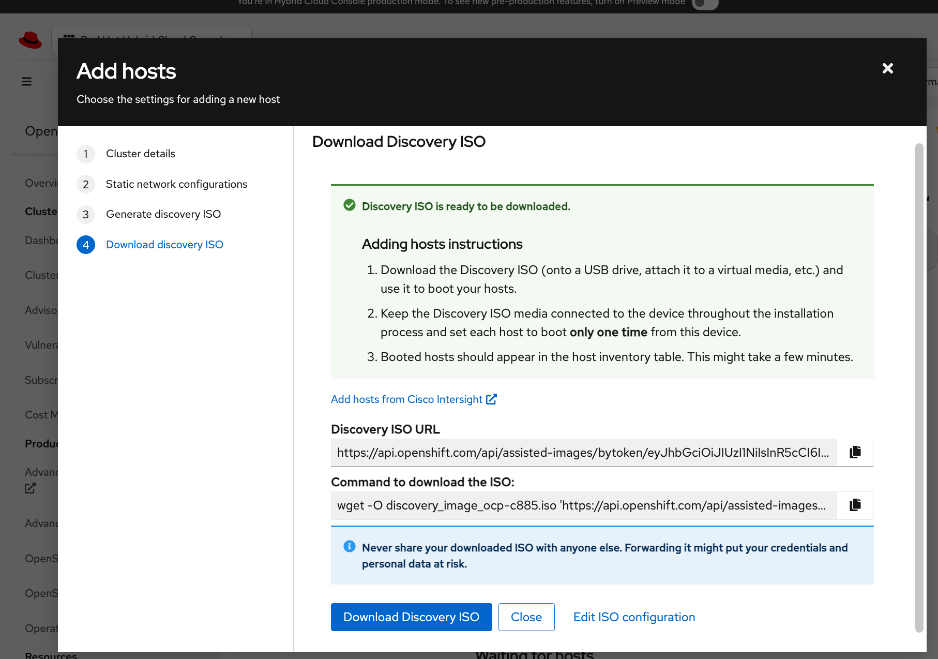

| CVD_07 |

Deploy Red Hat OpenShift on Bare Metal UCS Management Servers

● Setup prerequisites for deploying the cluster such as setting up an installer workstation, DNS, DHCP and so on.

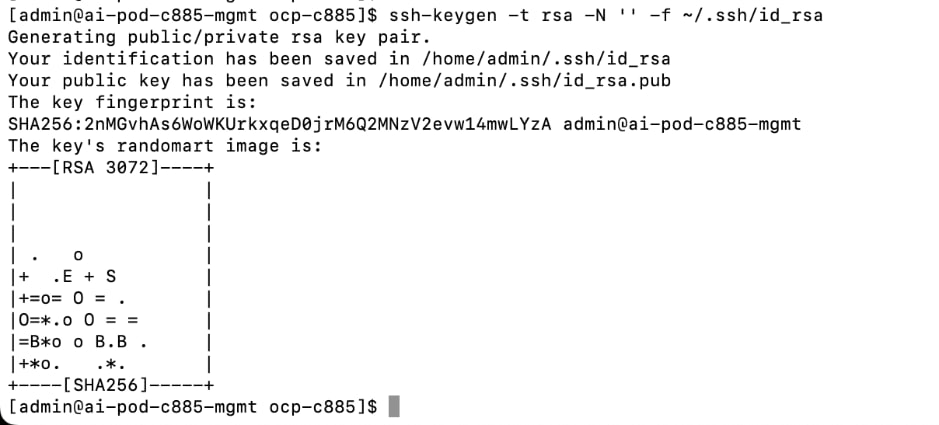

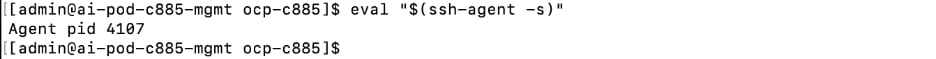

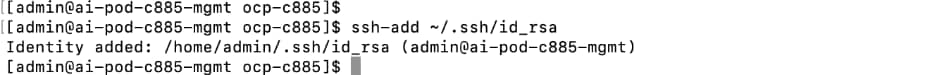

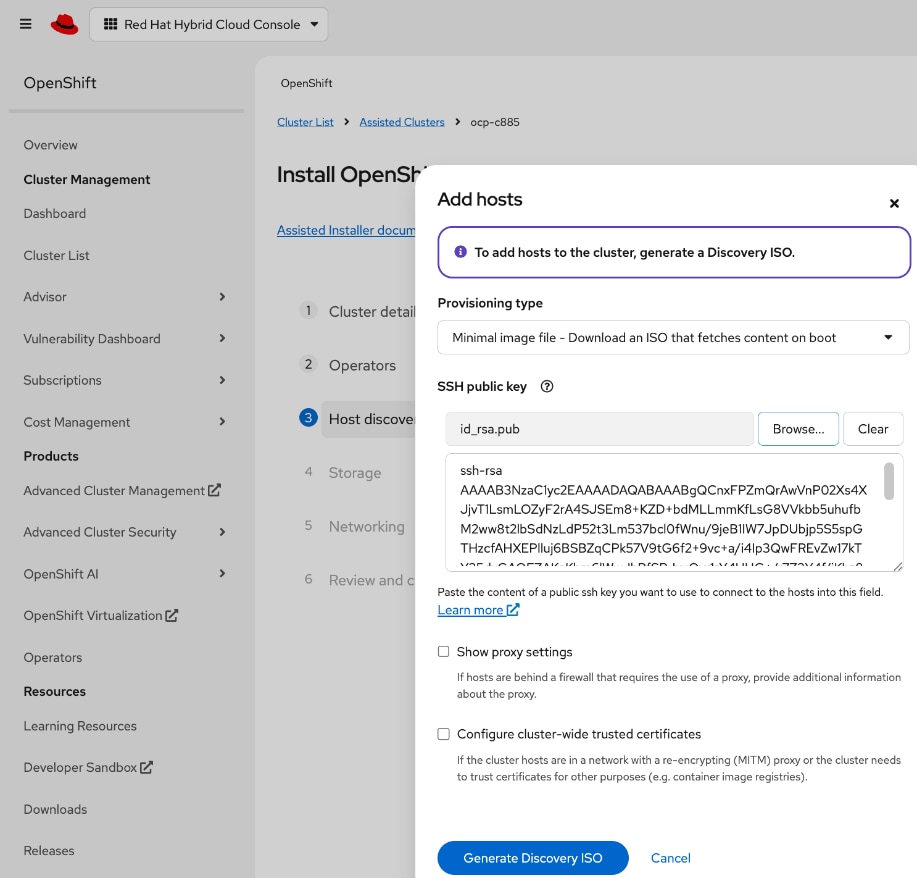

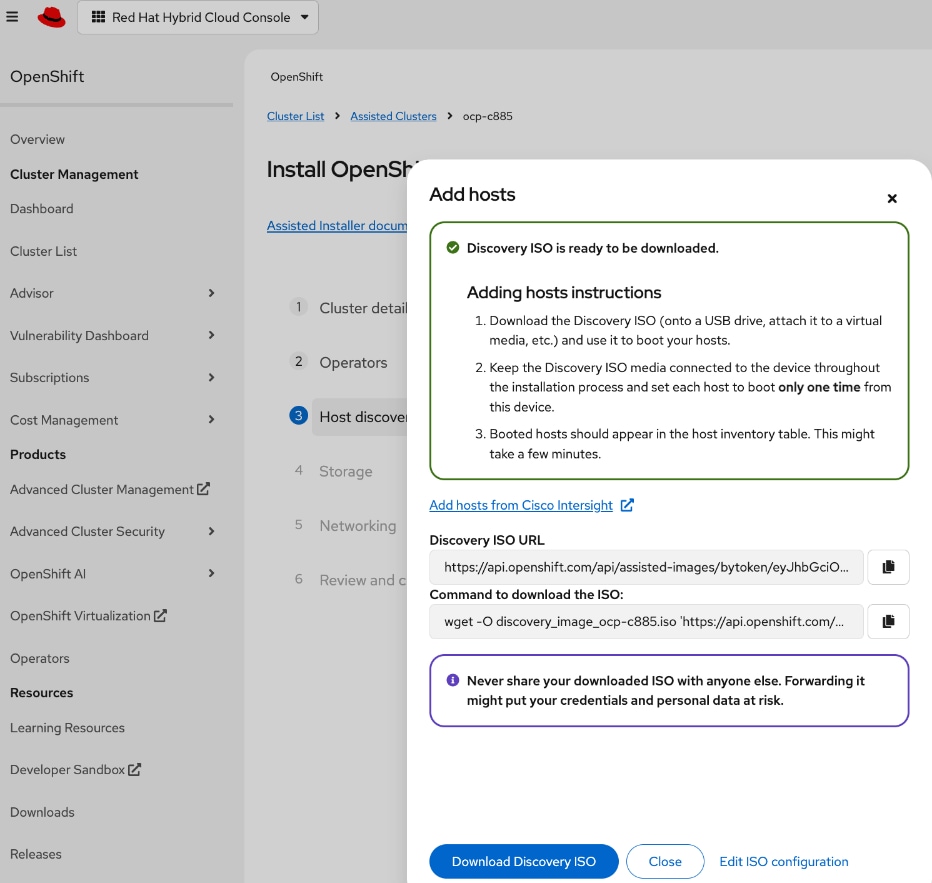

◦ Deploy an installer machine to remotely manage the OpenShift cluster and to serve as an HTTP server to load OpenShift images on Cisco UCS C885 servers (later). Generate public SSH keys on the installer to enable SSH access to OpenShift cluster post-install.

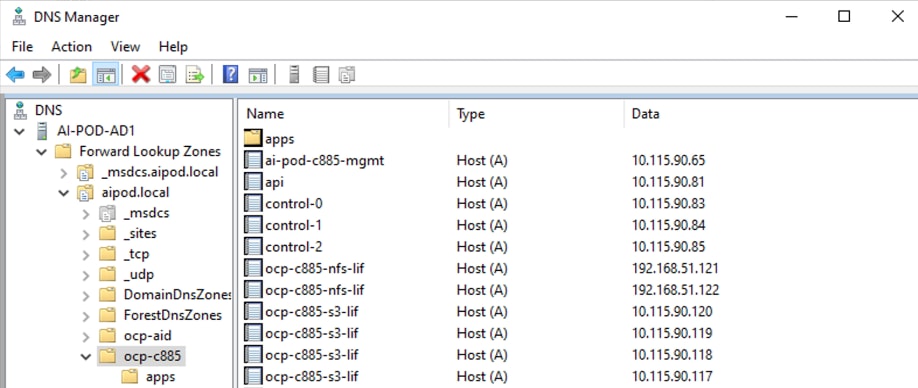

◦ Add DNS records for cluster API and Ingress Virtual IP (VIP)

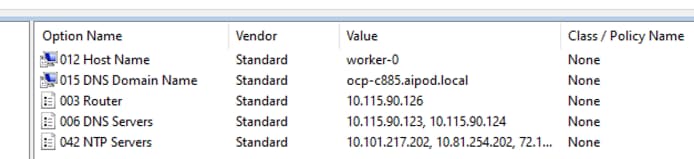

◦ Add DHCP pool for OpenShift cluster nodes to use. Configure DHCP options for NTP, Gateway (for routed subnets) and DNS.

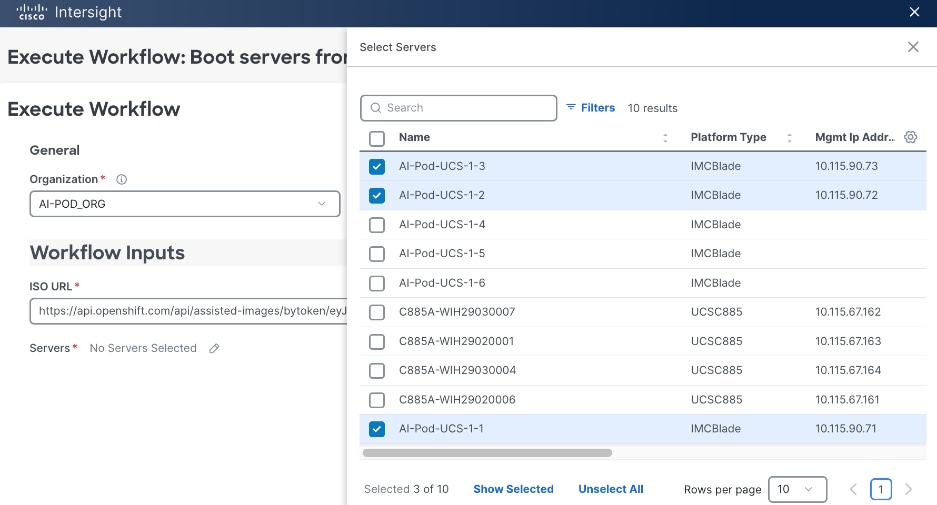

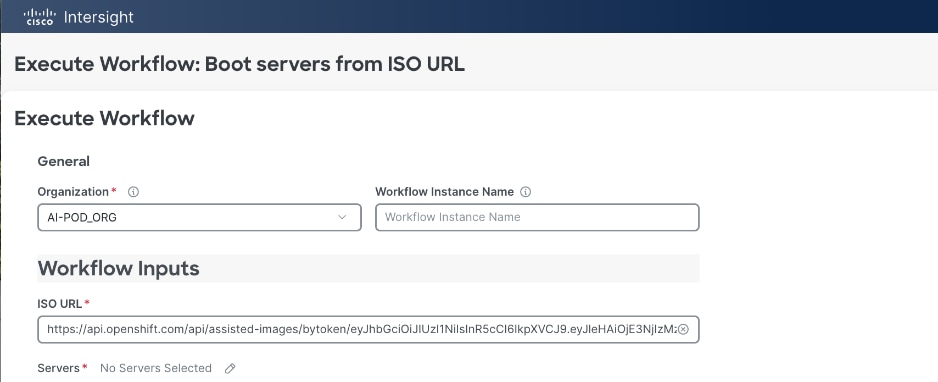

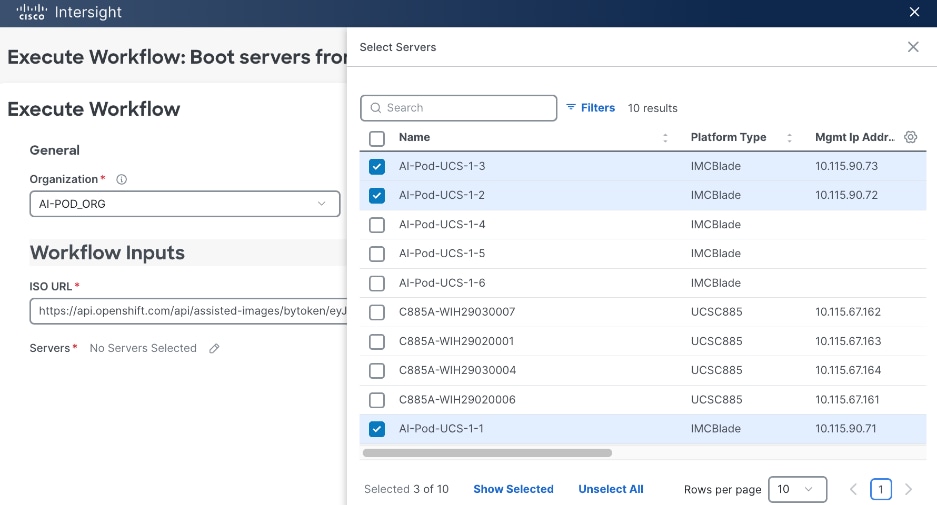

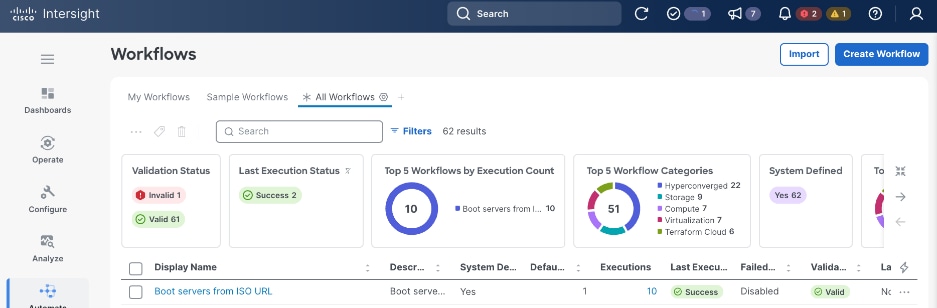

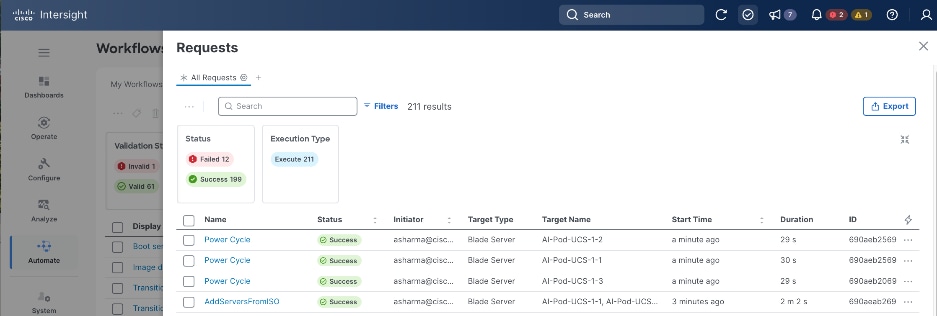

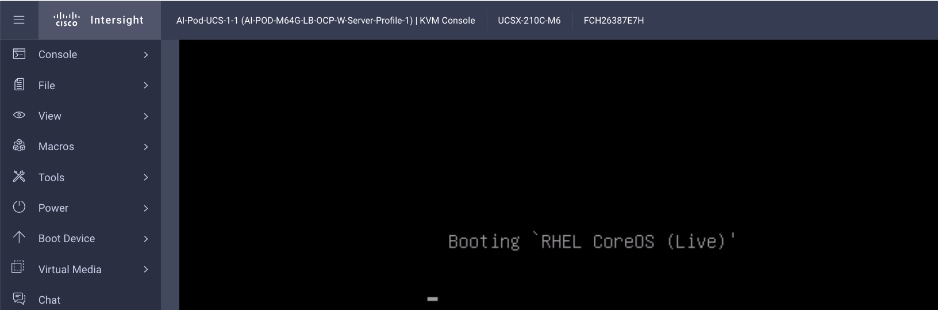

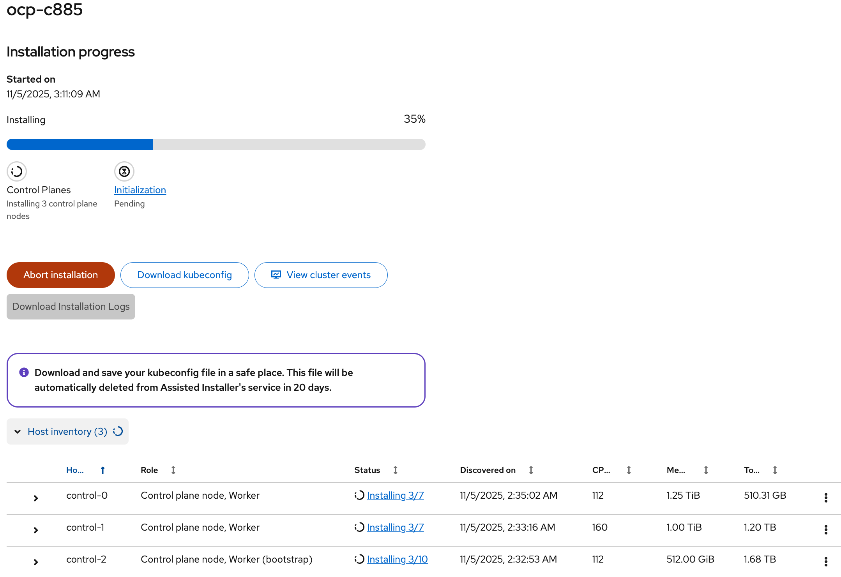

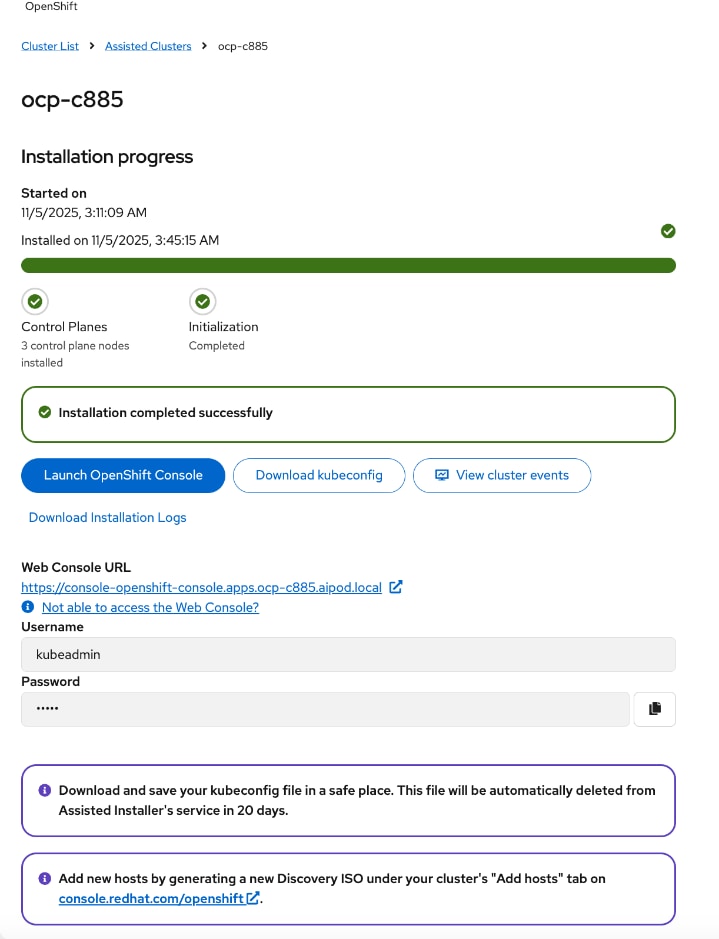

● Deploy OpenShift cluster using Assisted Installer workflow from Red Hat Hybrid Cloud Console (console.redhat.com). Use Cisco Intersight integration to seamlessly discover and deploy OpenShift on bare metal UCS management/control nodes.

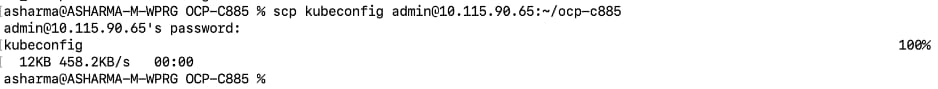

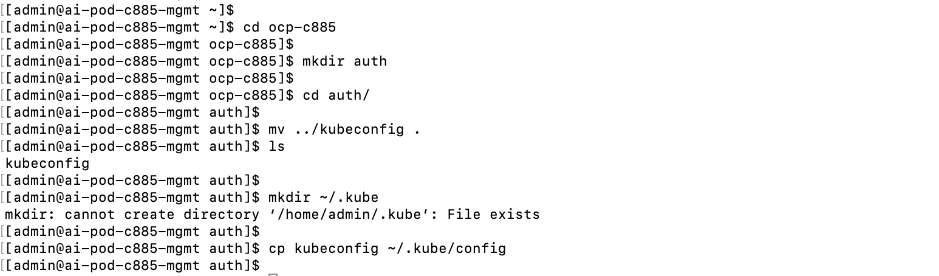

● Complete post-deployment setup

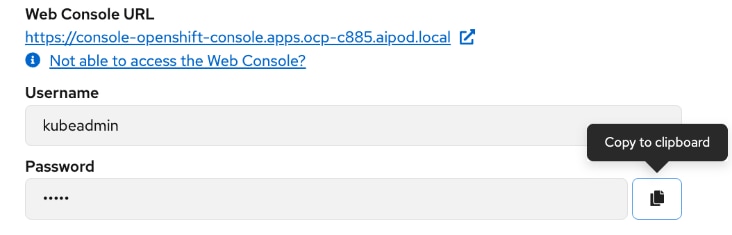

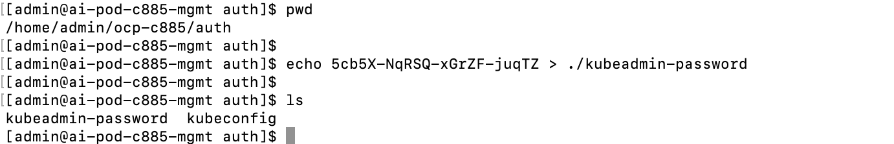

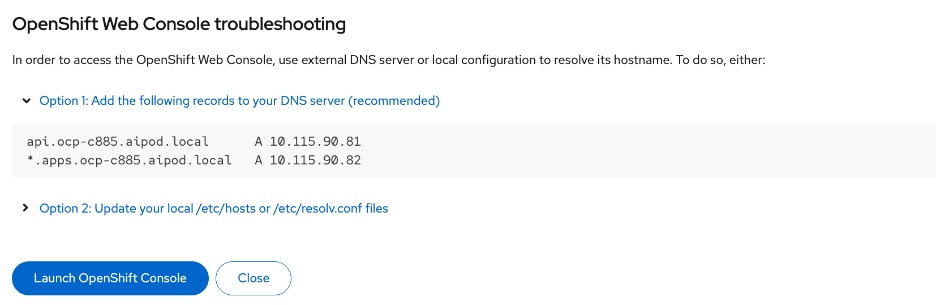

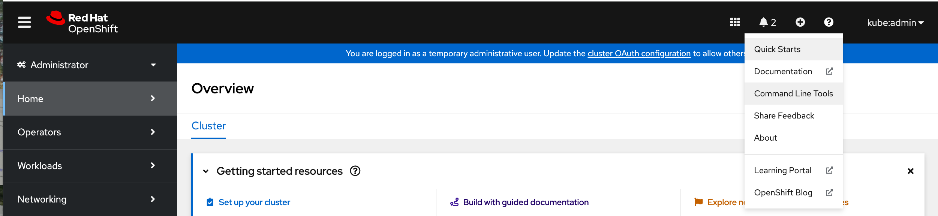

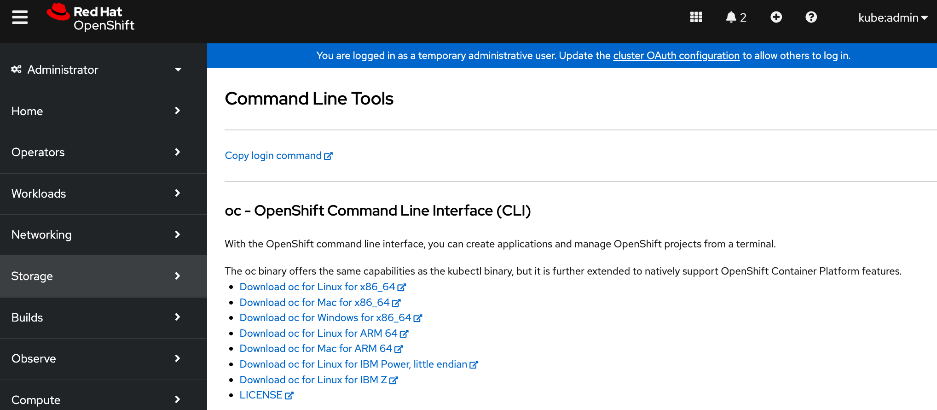

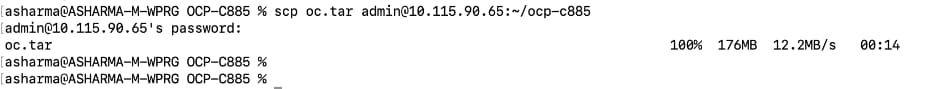

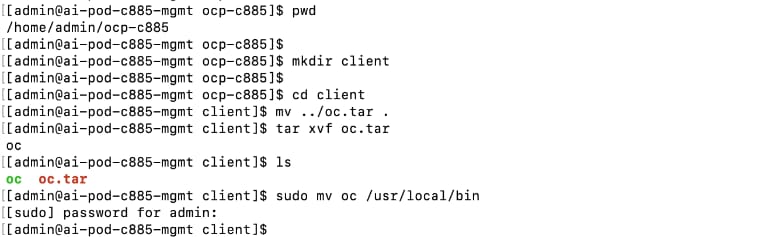

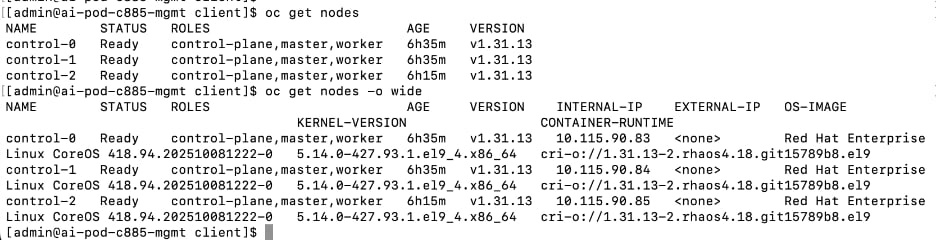

◦ Save installation files - kubeconfig and kubeadmin password

◦ Download oc tools

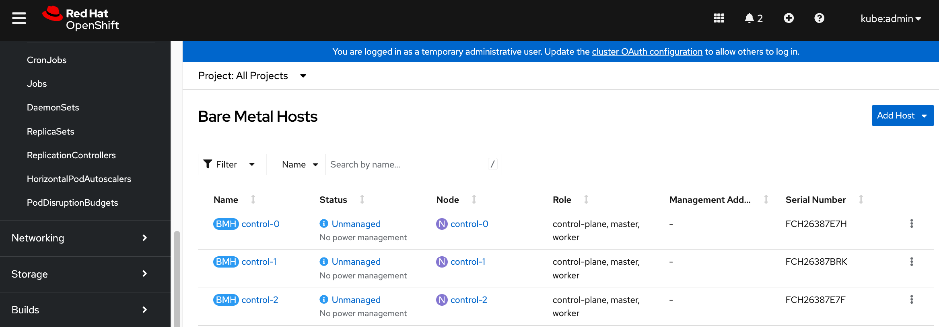

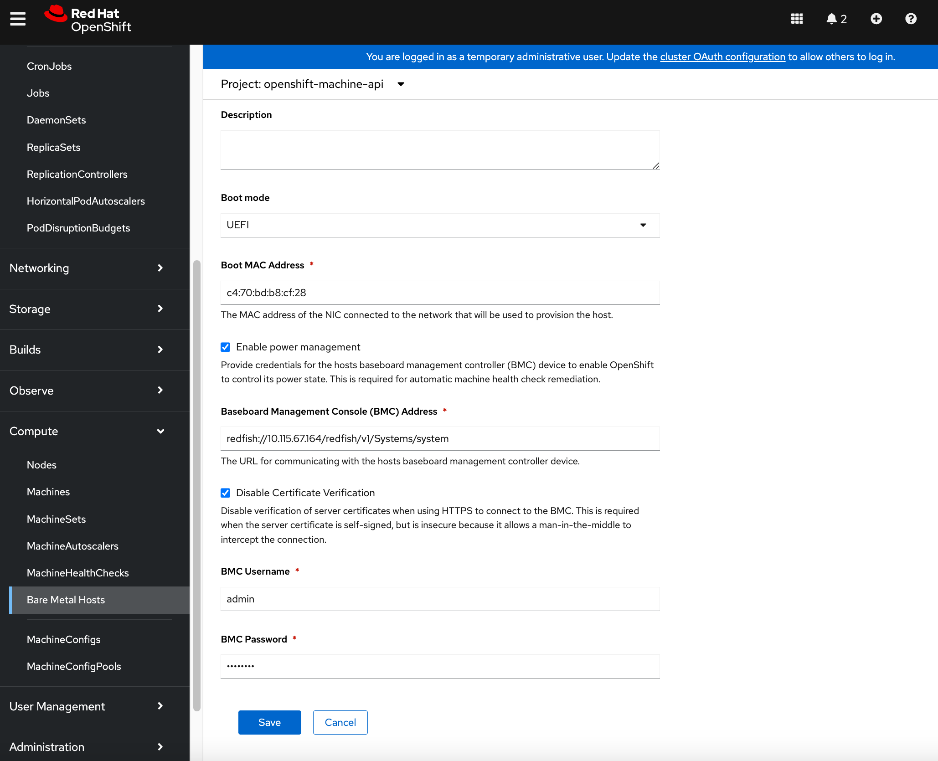

◦ Setup power management for the UCS bare metal hosts in the newly deployed cluster

◦ Reserve resources for system components on control and worker nodes

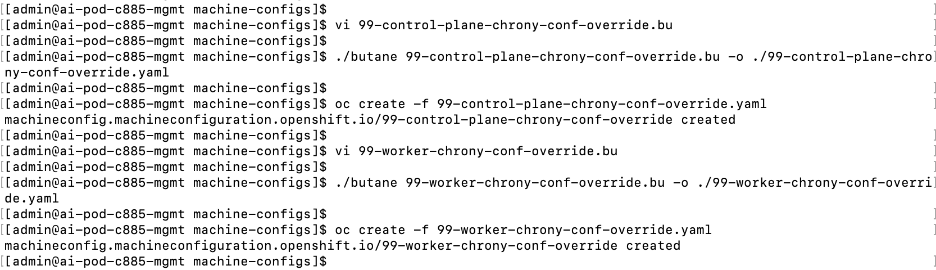

◦ Setup NTP on control and worker nodes

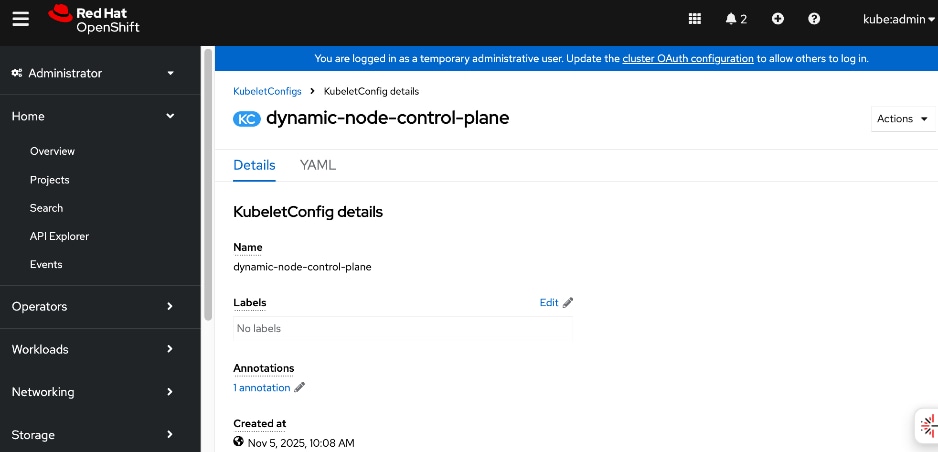

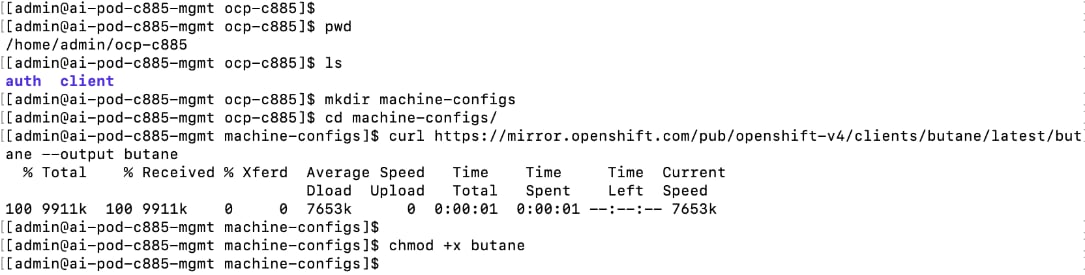

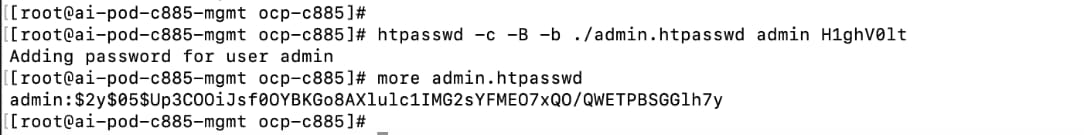

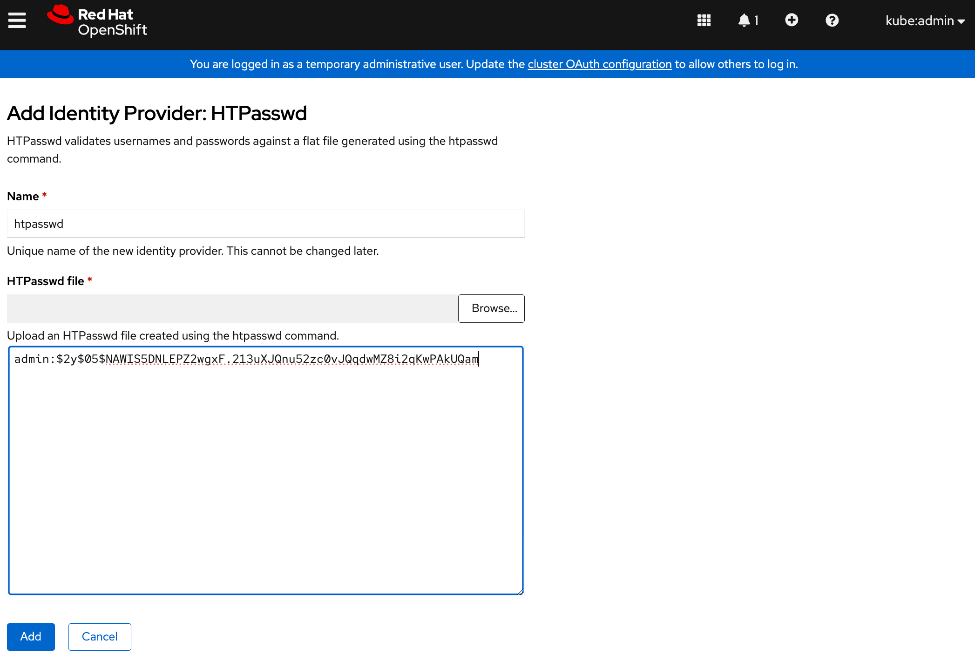

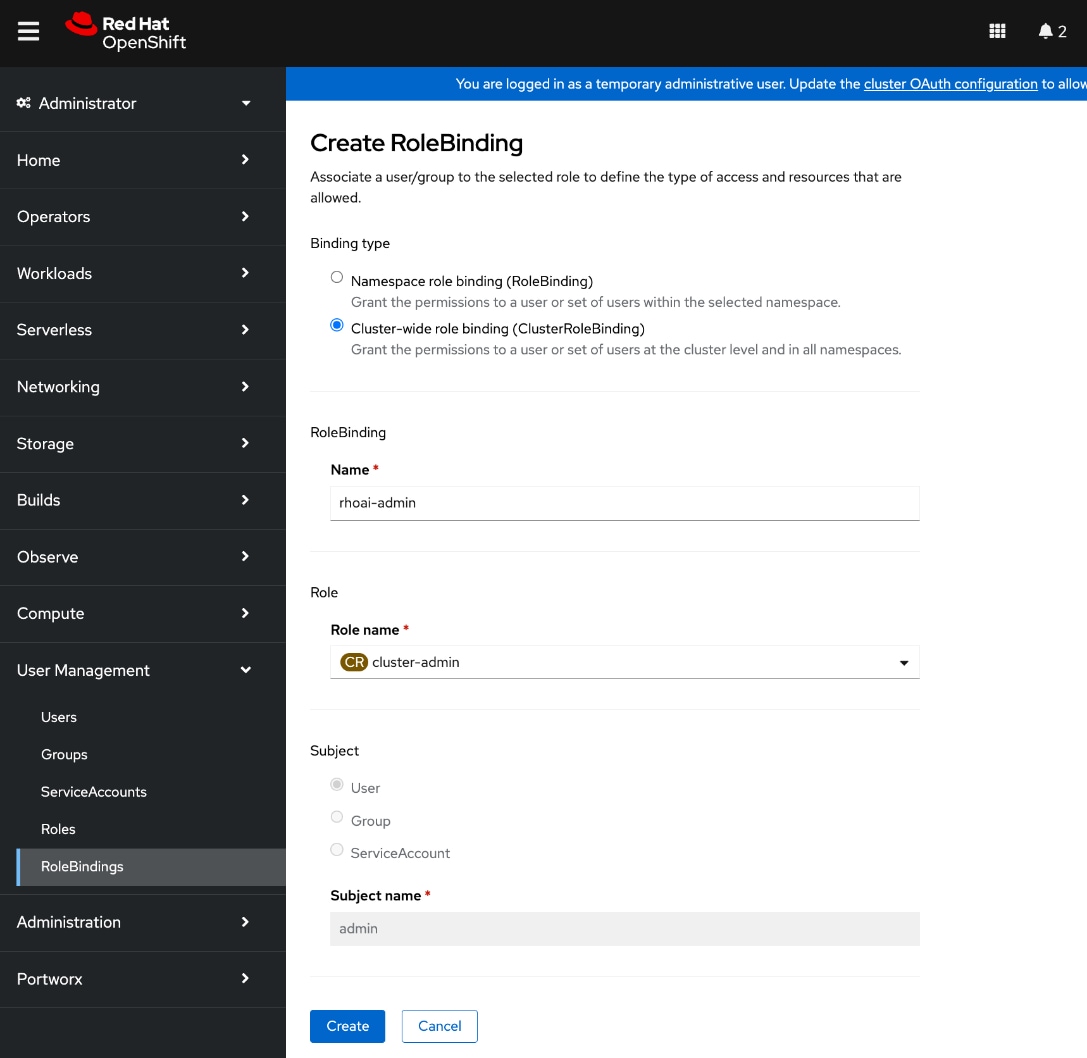

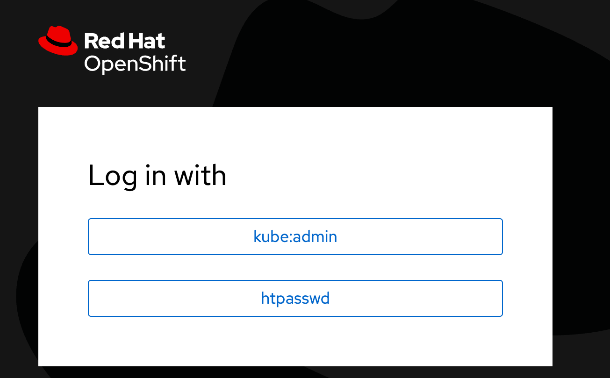

◦ Setup a second administrative user (htpasswd used in this setup)

|

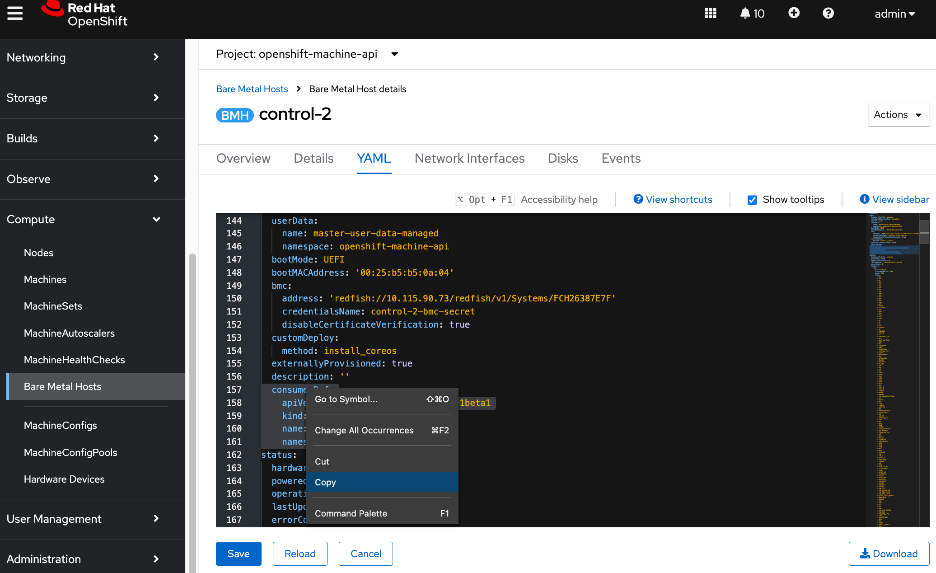

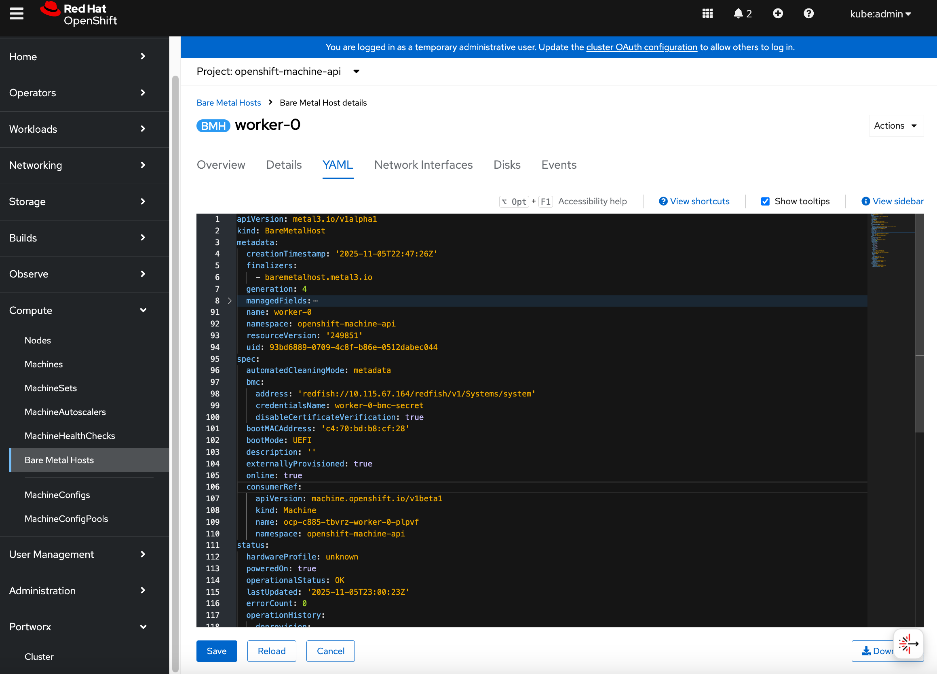

| CVD_08 |

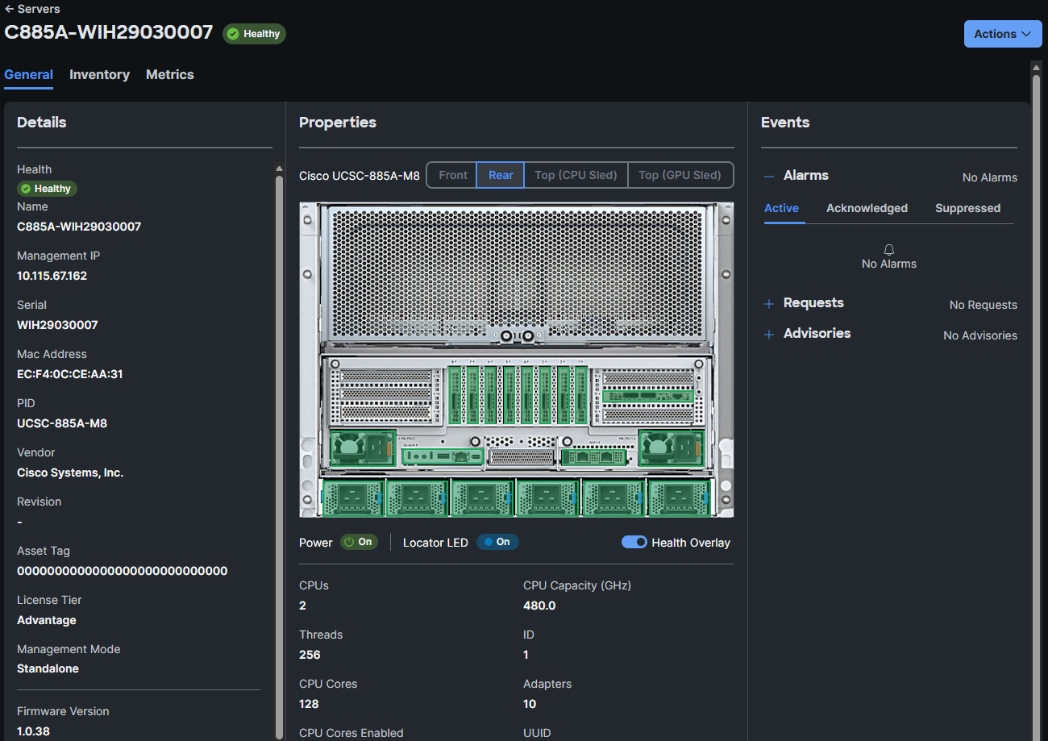

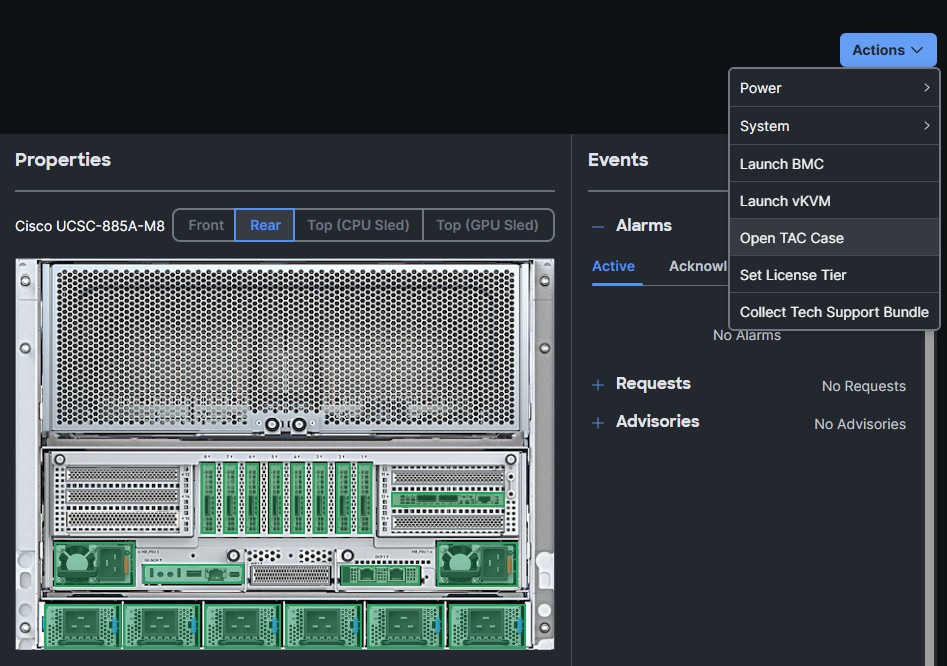

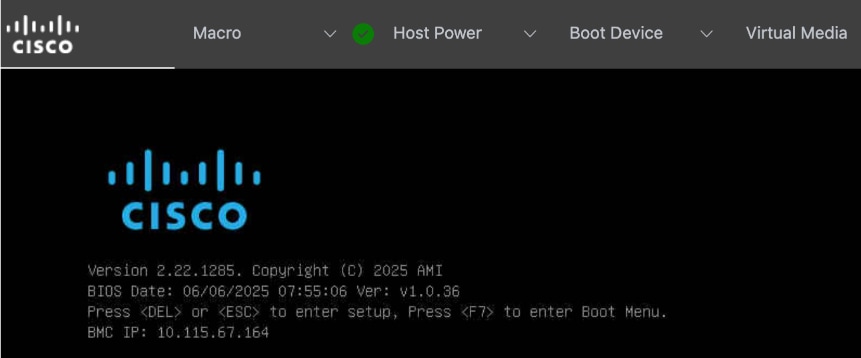

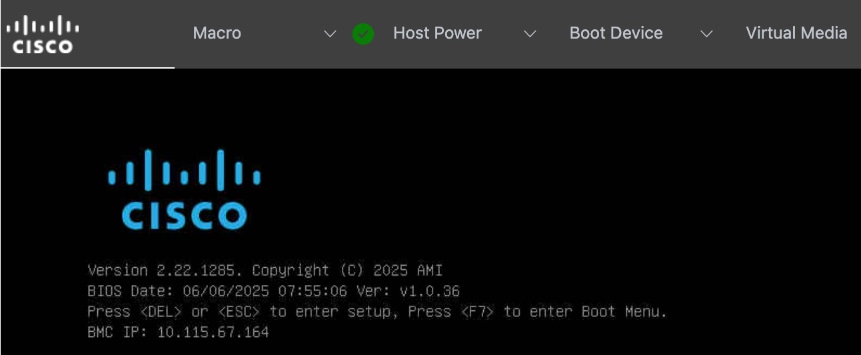

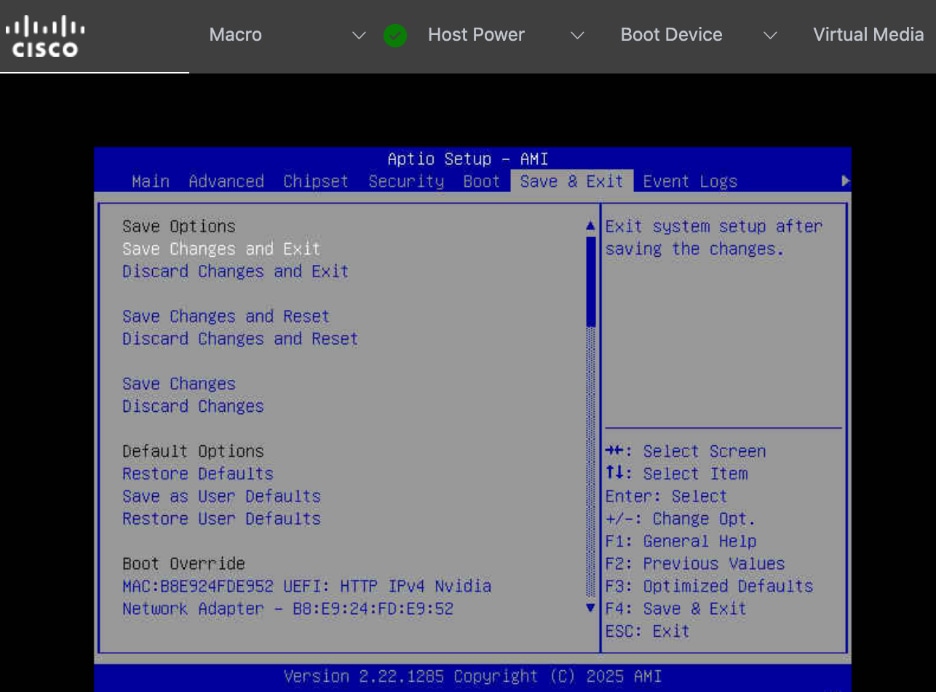

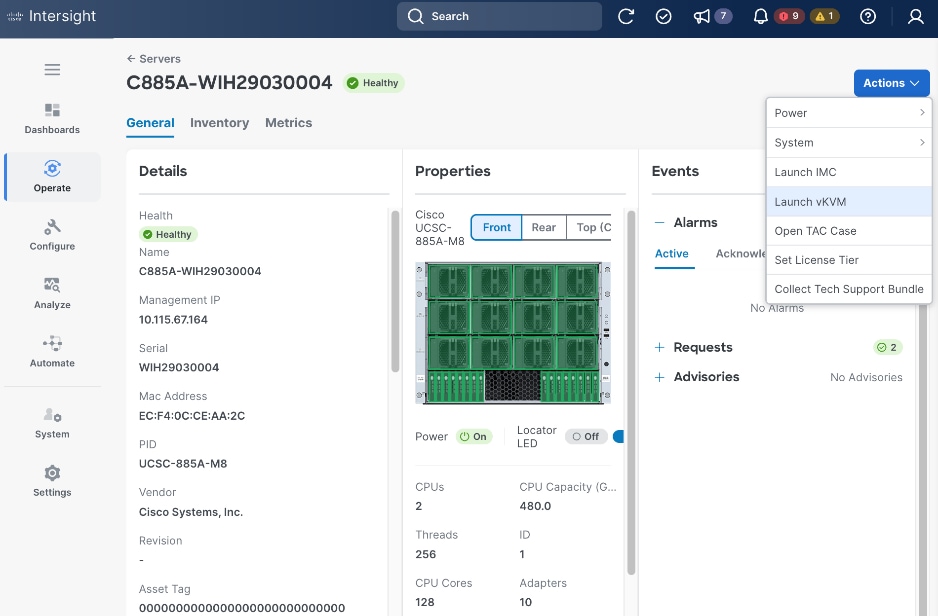

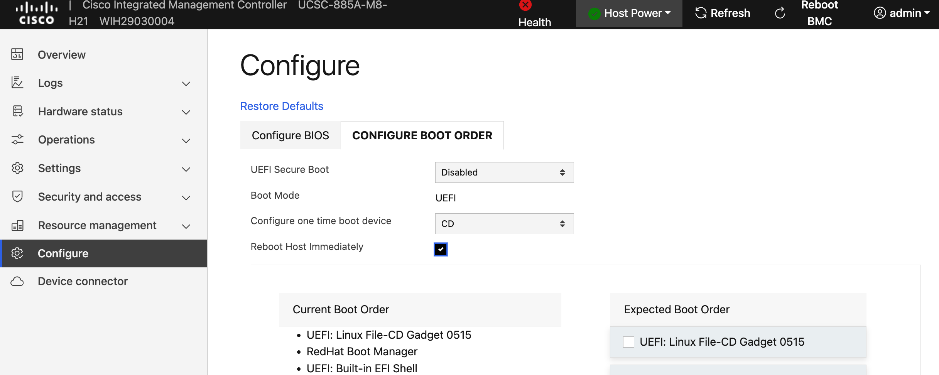

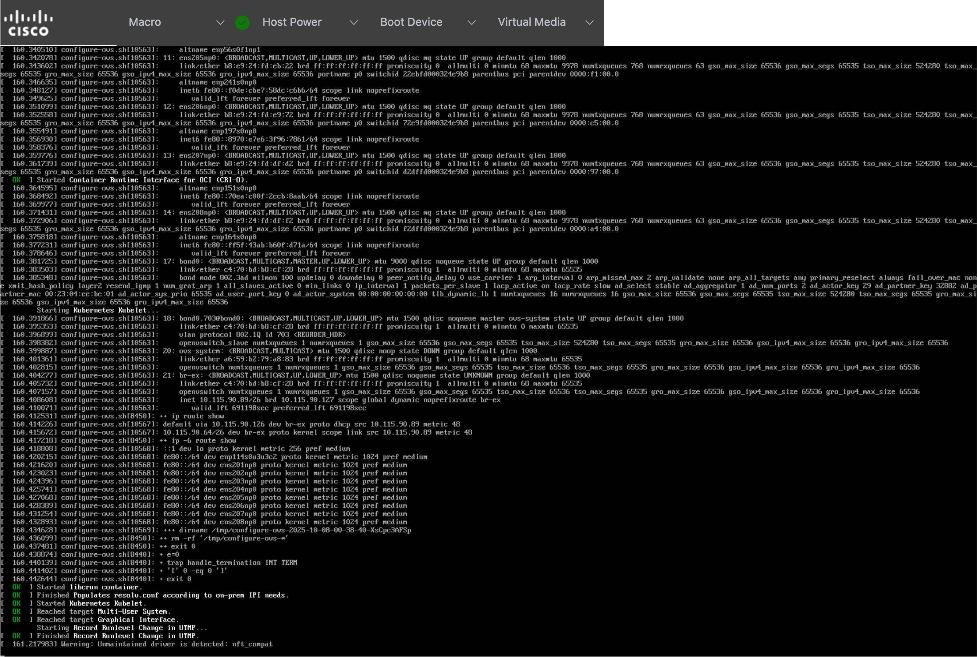

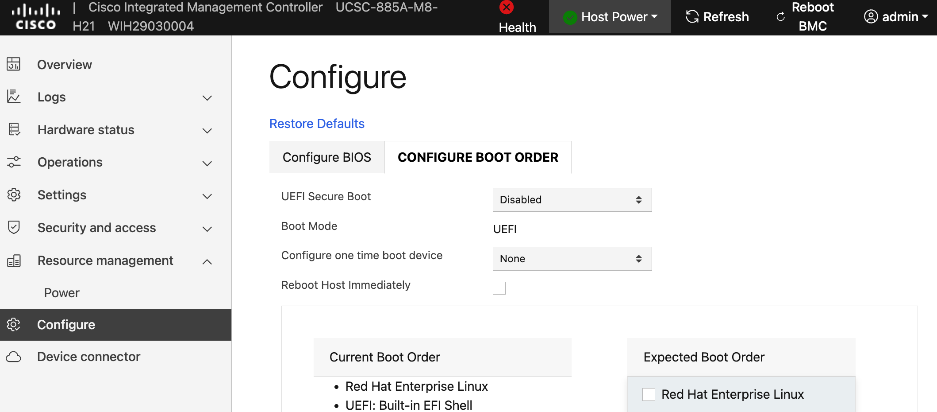

Initial Setup of Cisco UCS C885A GPU Servers

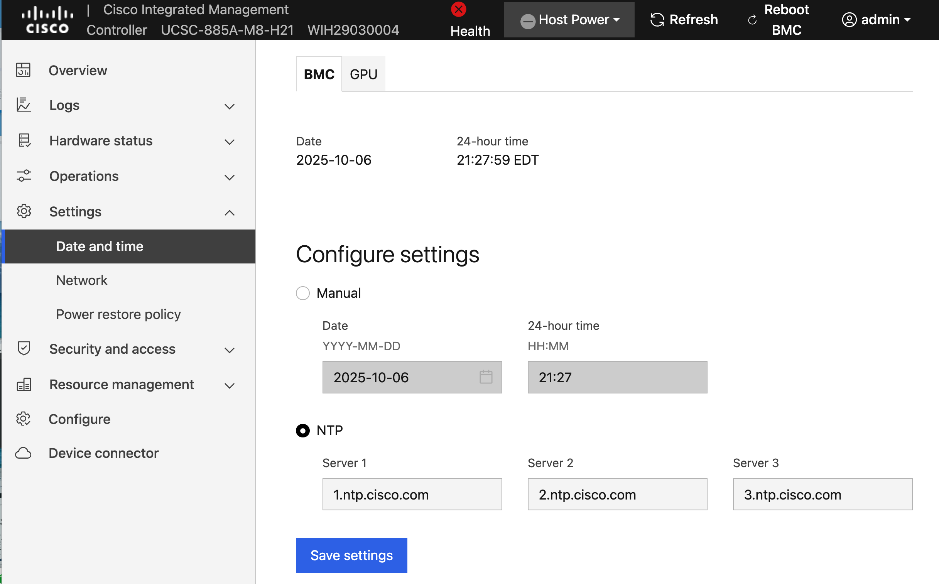

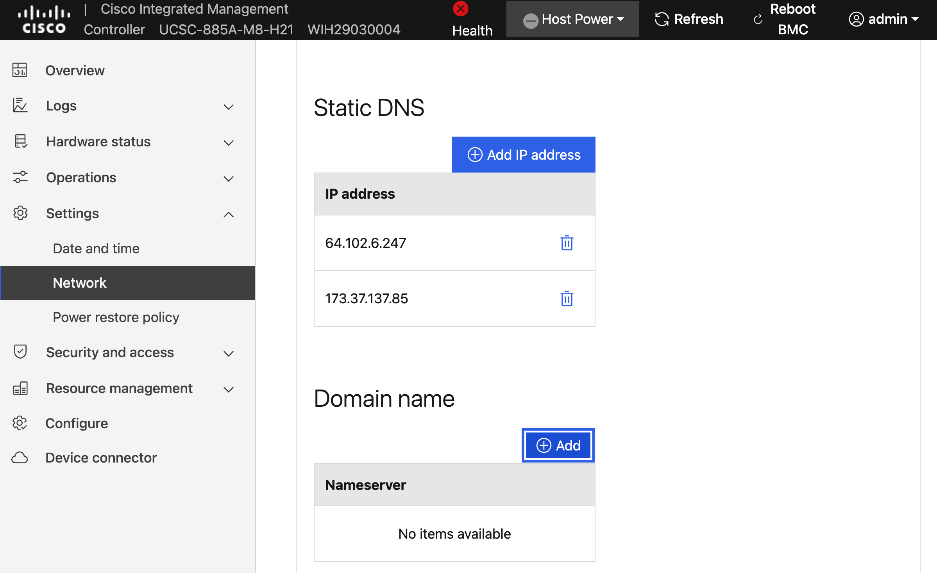

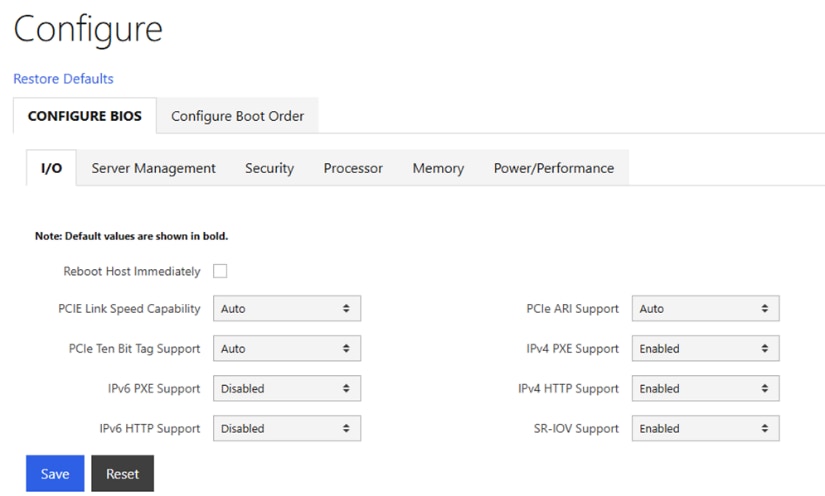

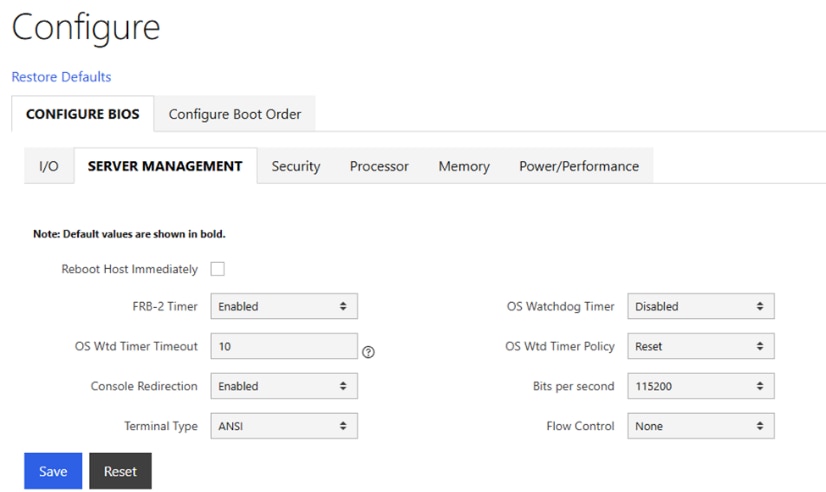

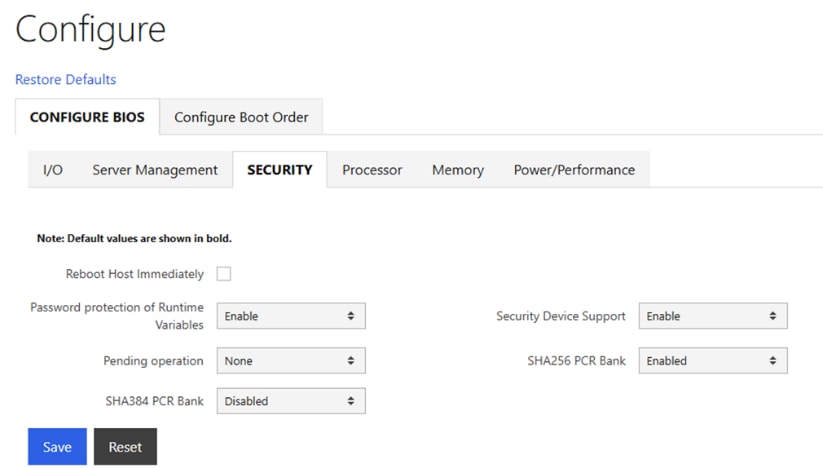

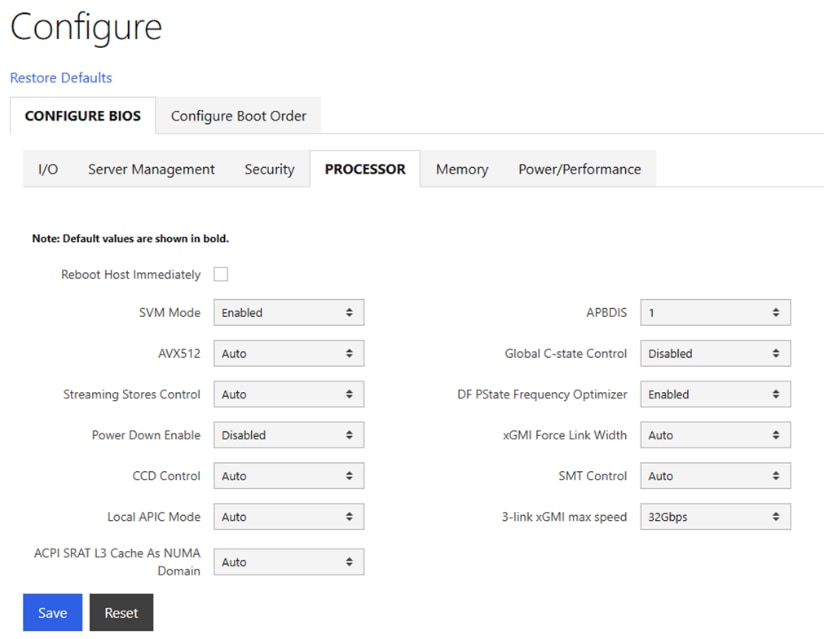

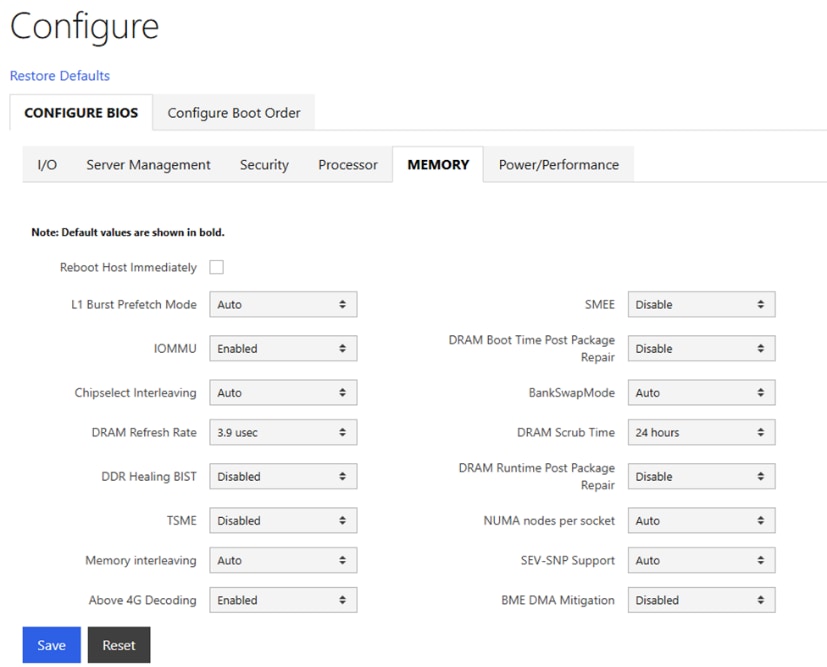

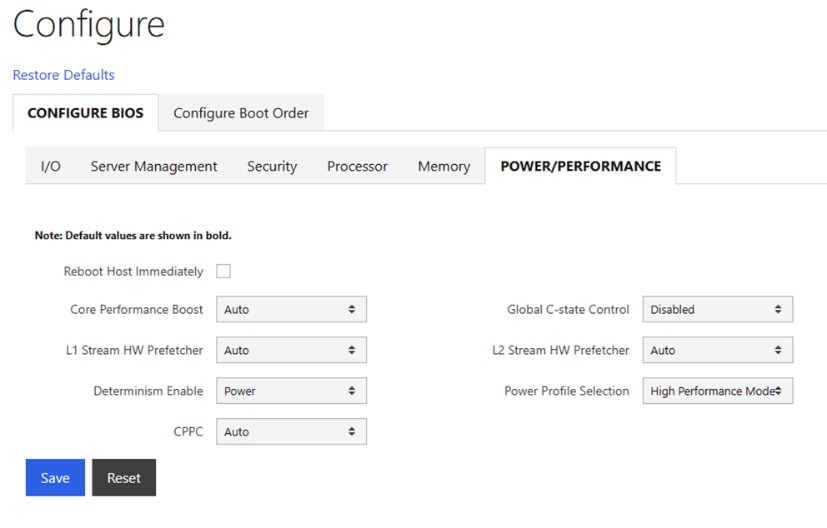

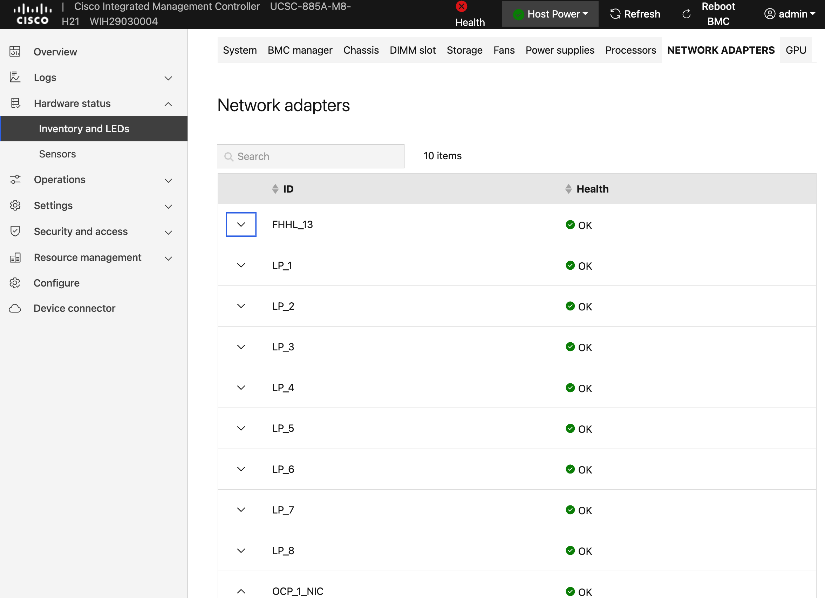

● Configure NTP, DNS, Security policies, BIOS settings and other basic setup on Cisco UCS via Cisco BMC.

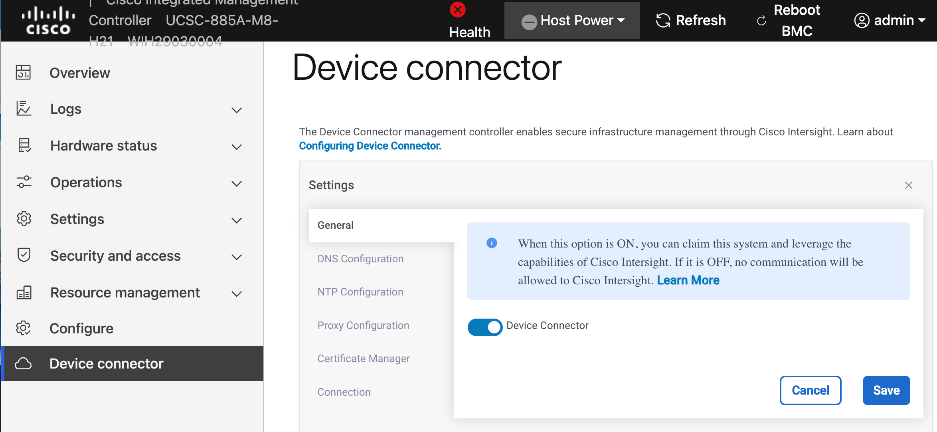

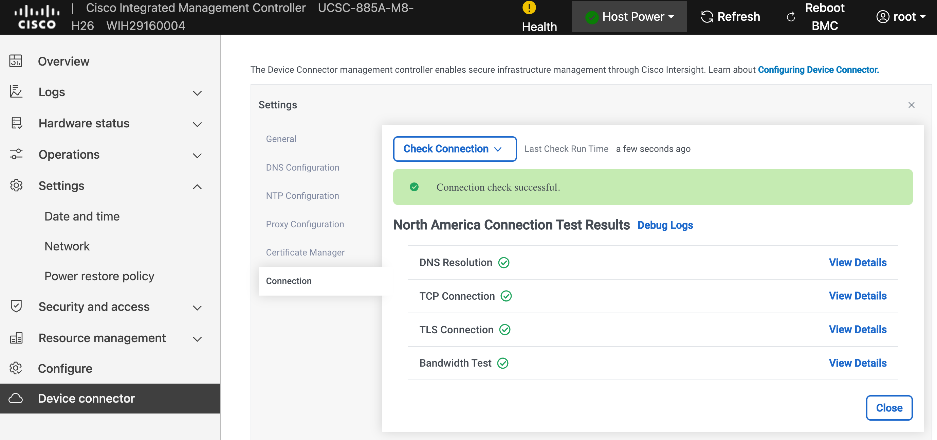

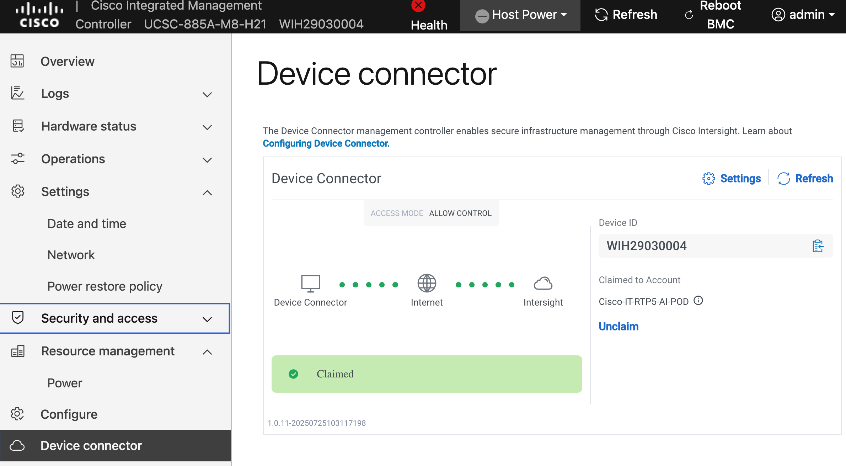

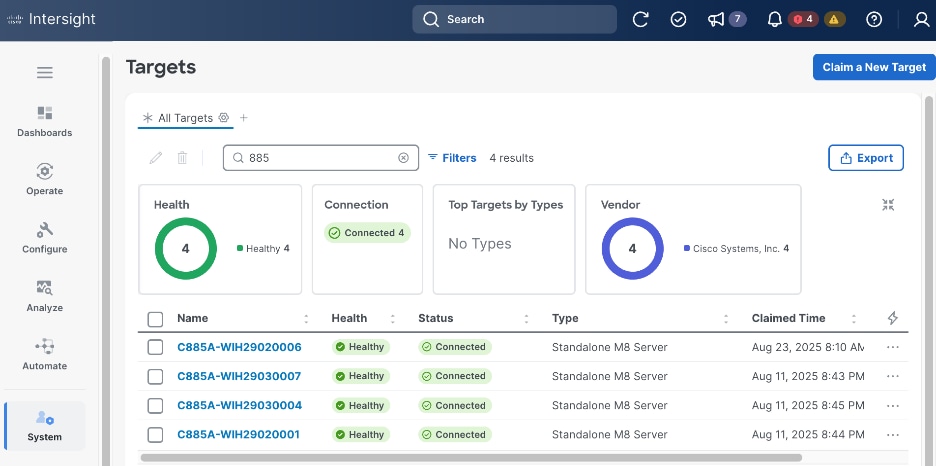

● Setup Intersight Management – Claim and add UCS C885A nodes in Cisco Intersight.

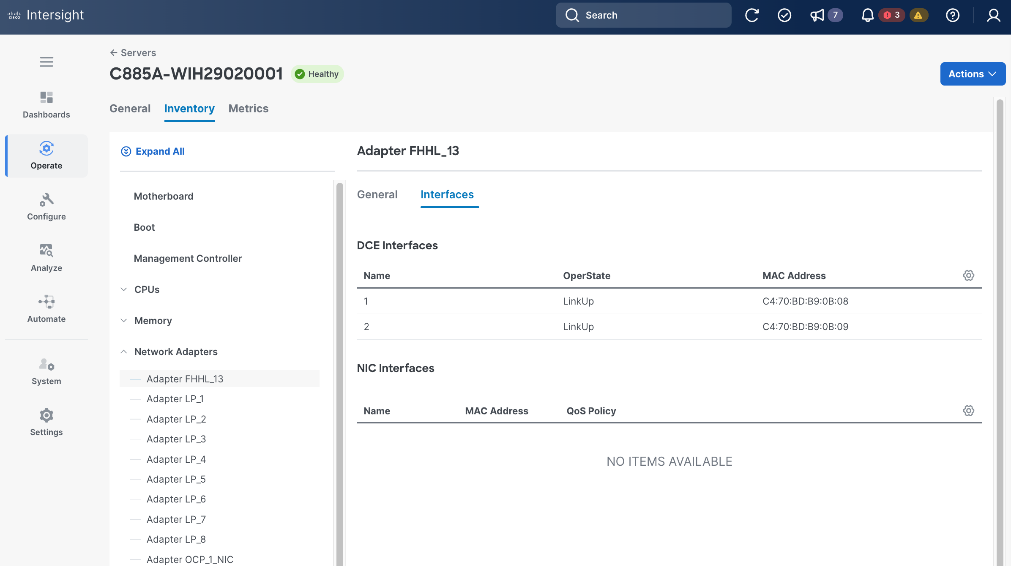

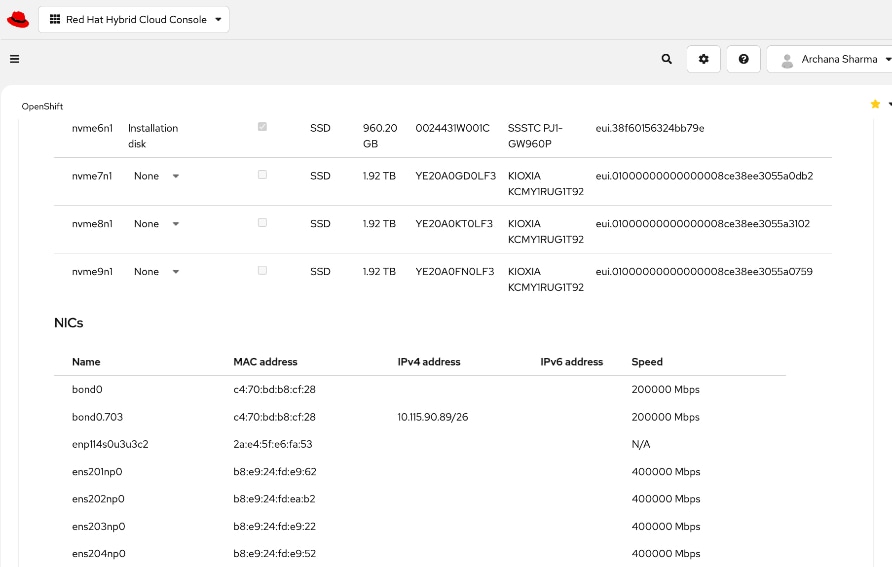

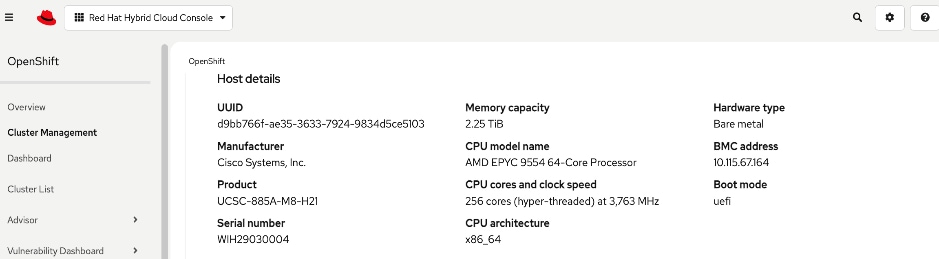

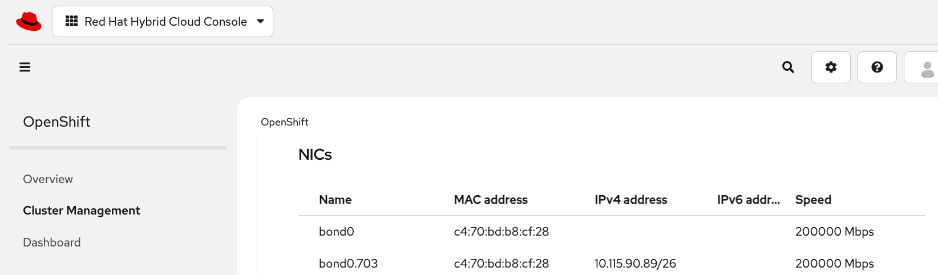

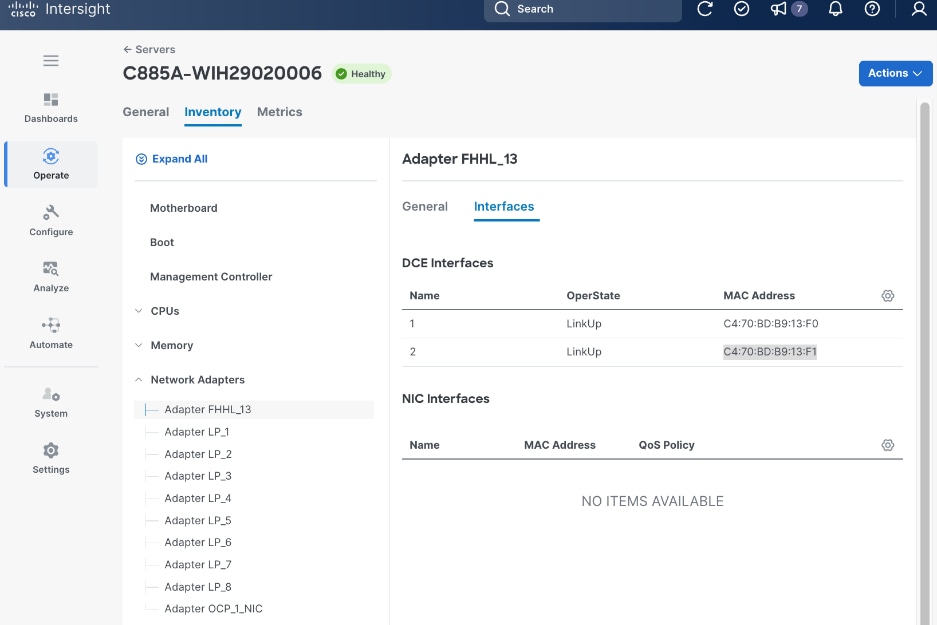

● Collect MAC addresses of the frontend NIC from all UCS C885A Nodes. The first port will be provisioned as the cluster IP network in OpenShift. You can collect this from Intersight or via Cisco BMC.

● Create DHCP reservations for the mac addresses collected above.

● Create DNS records for the reserved DHCP IP addresses.

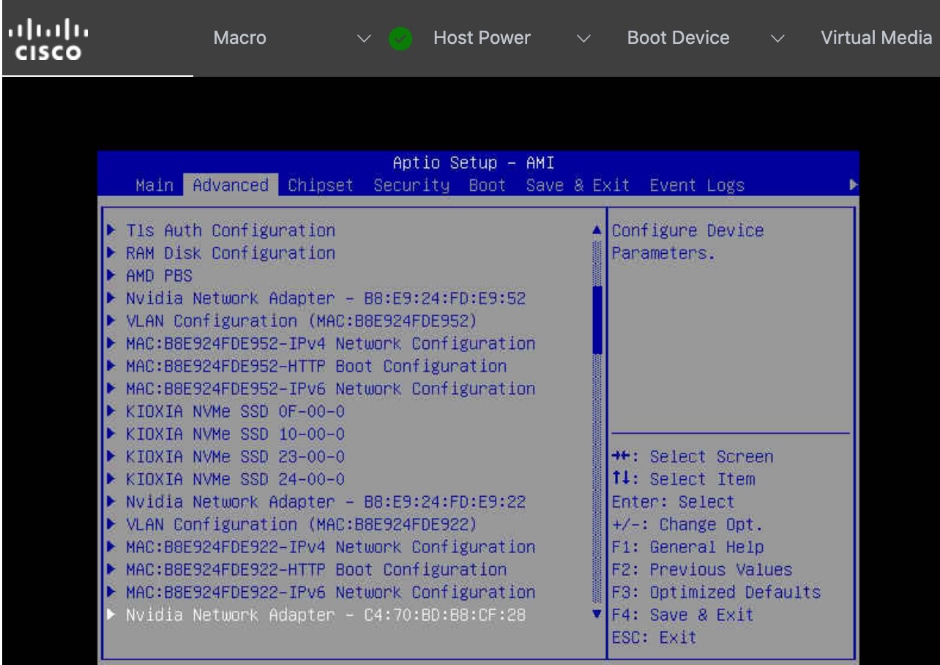

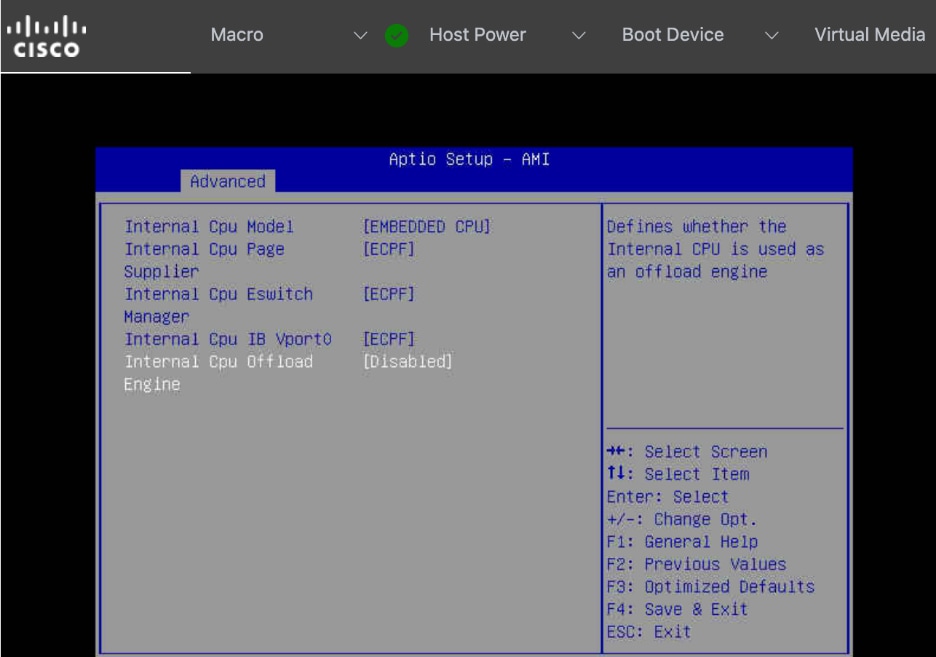

● Setup/Verify that the BlueField-3 NICs are in NIC mode (vs. DPU mode).

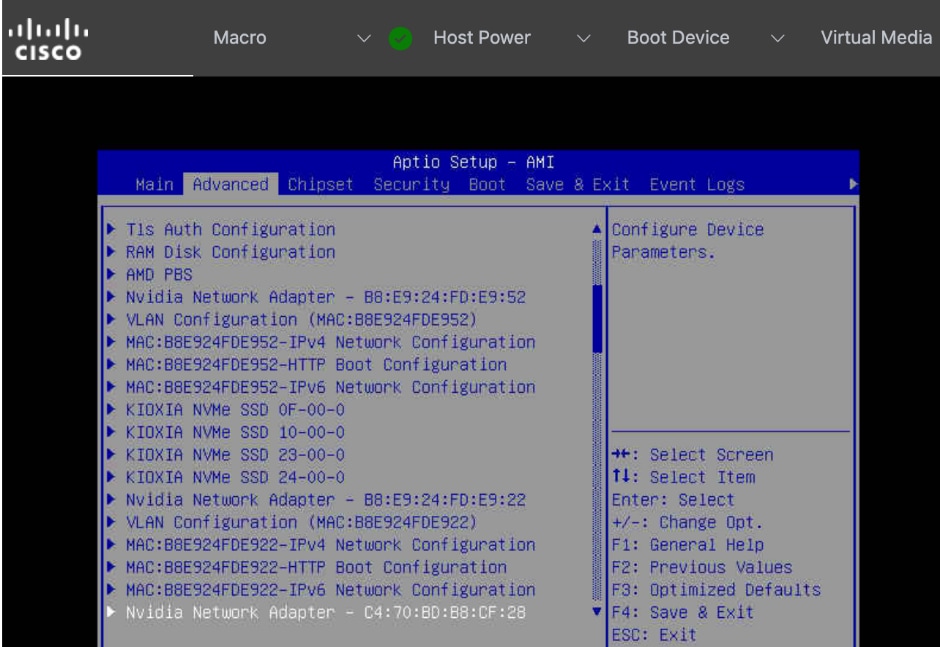

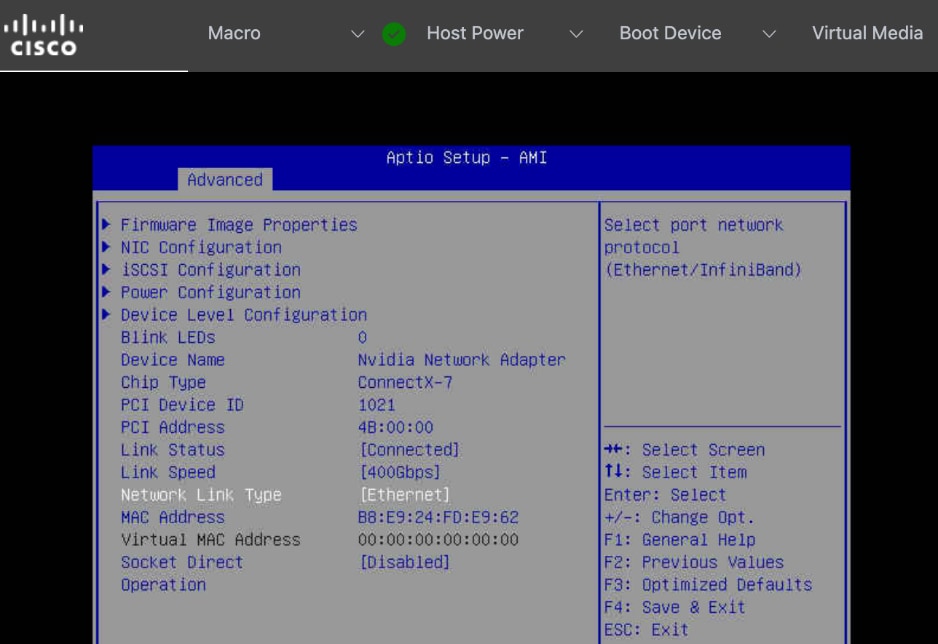

● Setup/Verify that the NVIDIA CX-7 cards are in Ethernet mode (vs. InfiniBand/IB).

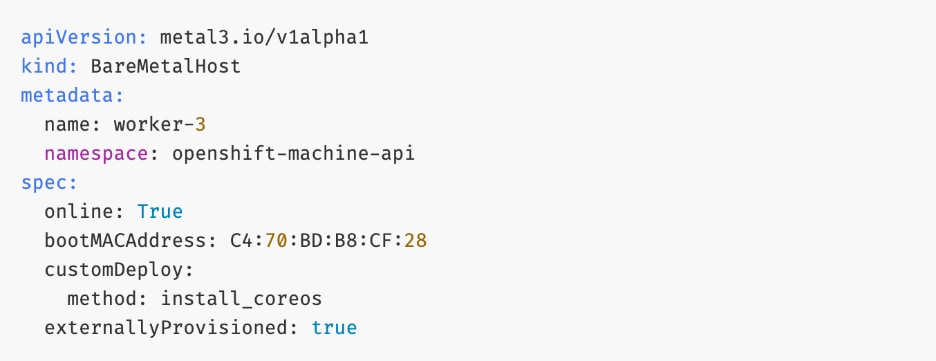

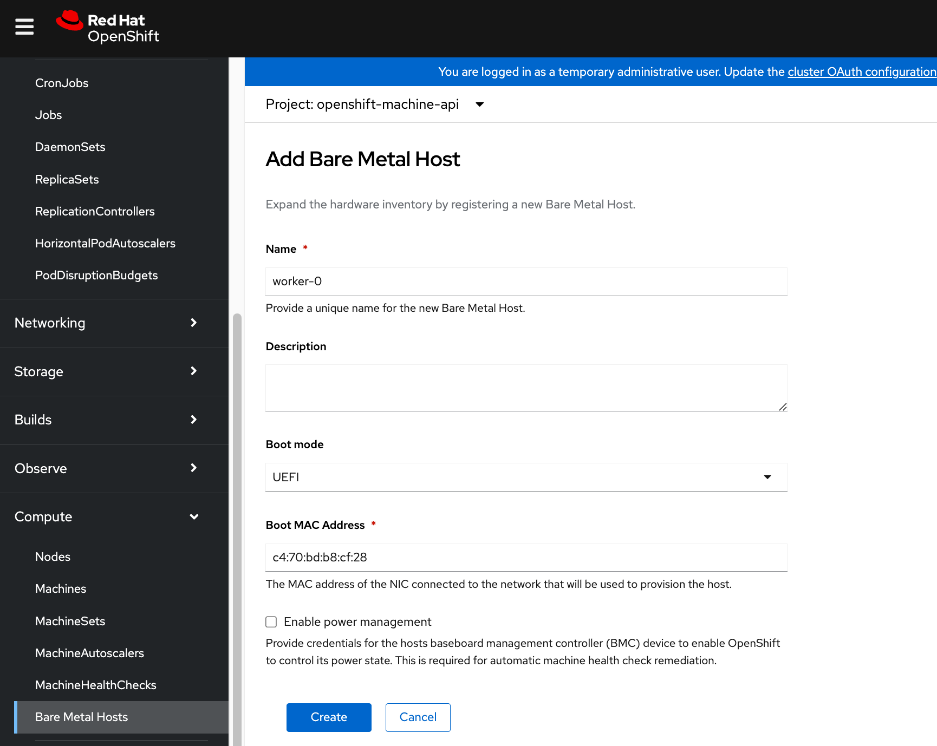

● Create machine configuration files on installer VM. UCS C885A will require a Bare Metal Host (BMH) config. file using the above frontend NIC MAC address.

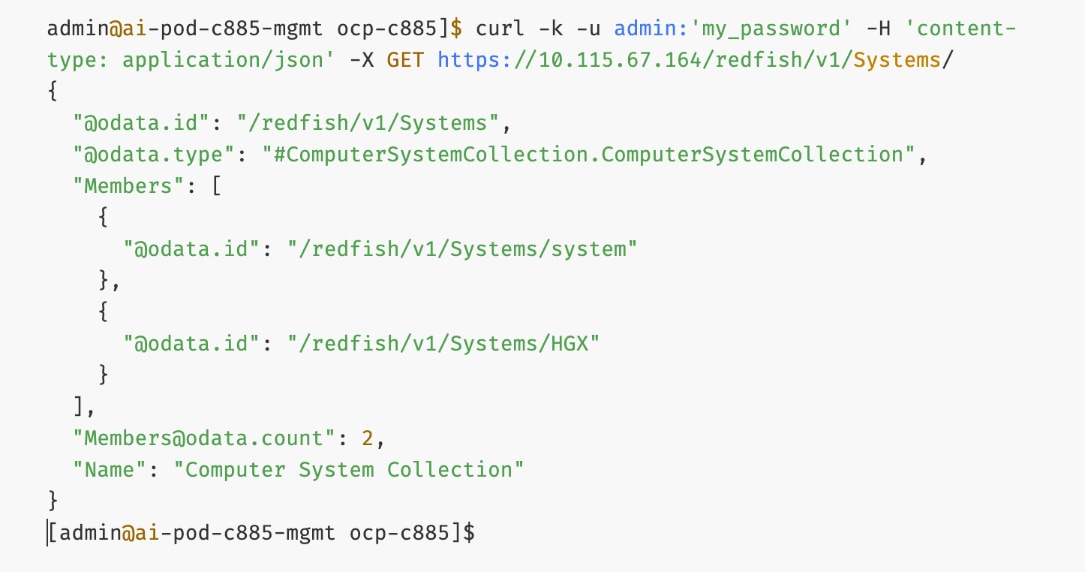

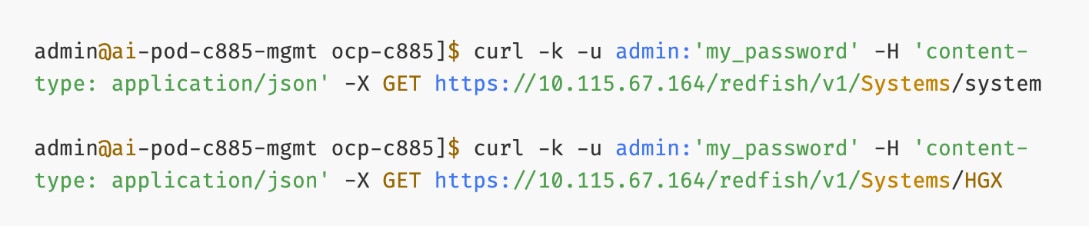

● Verify Redfish access to the UCS-C885A.

● Upgrade to latest firmware for all components on the UCS C885A. Use Cisco UCS Hardware Compatibility (HCL) tool and Intersight HCL check to confirm the latest version is deployed on the node.

|

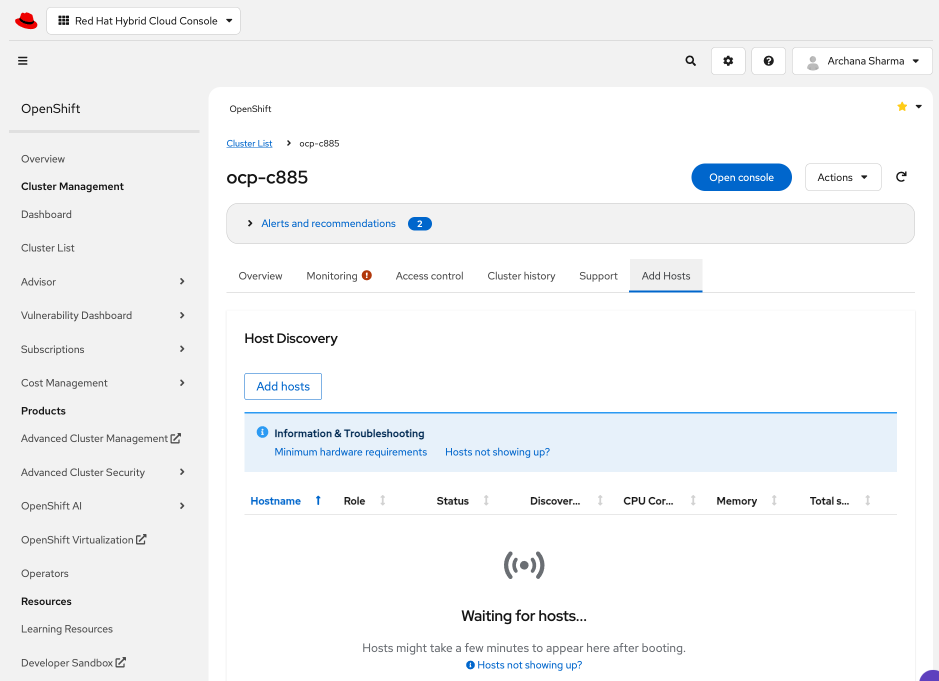

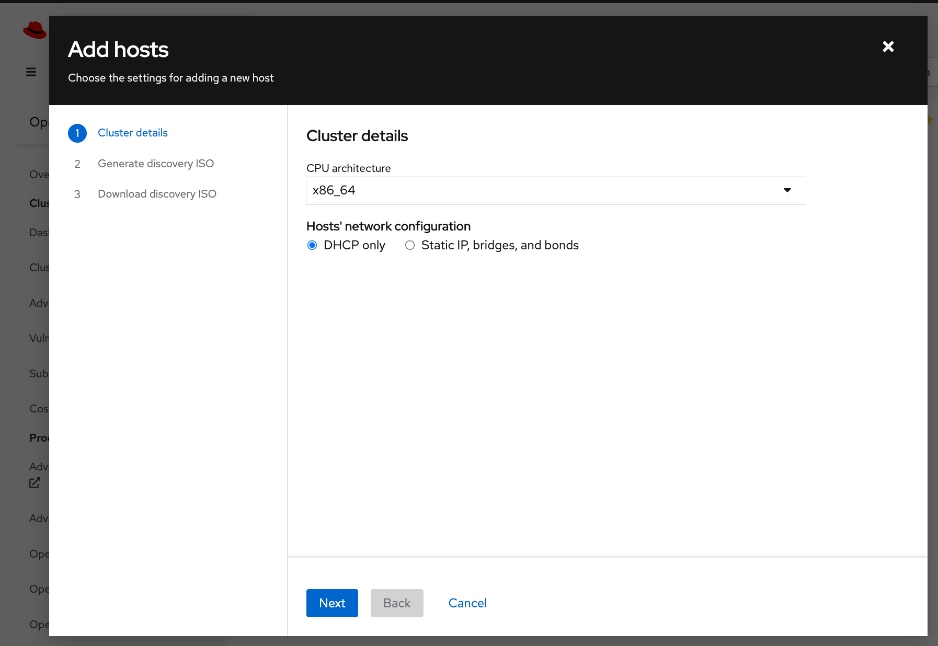

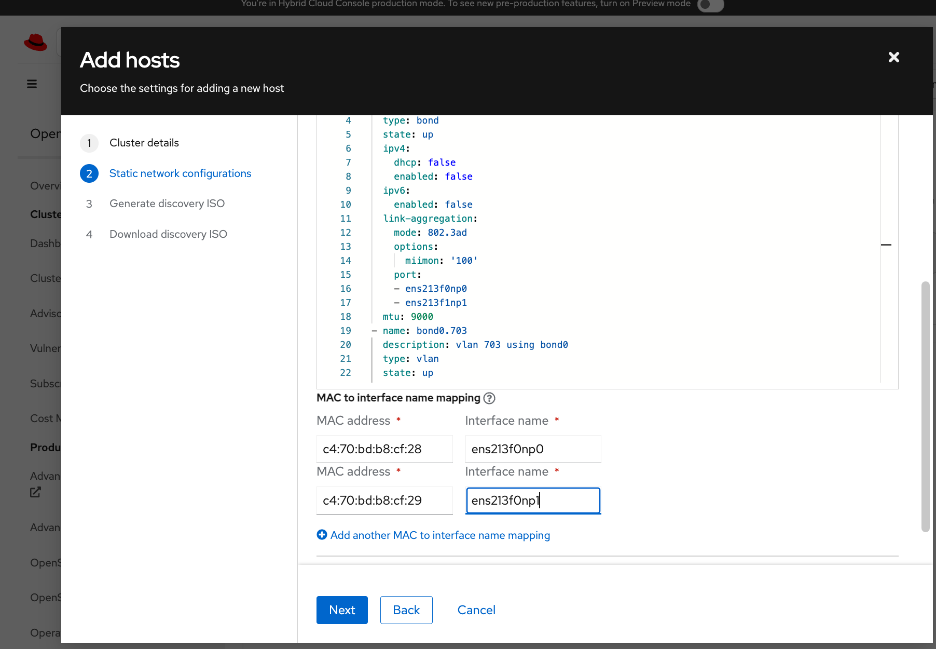

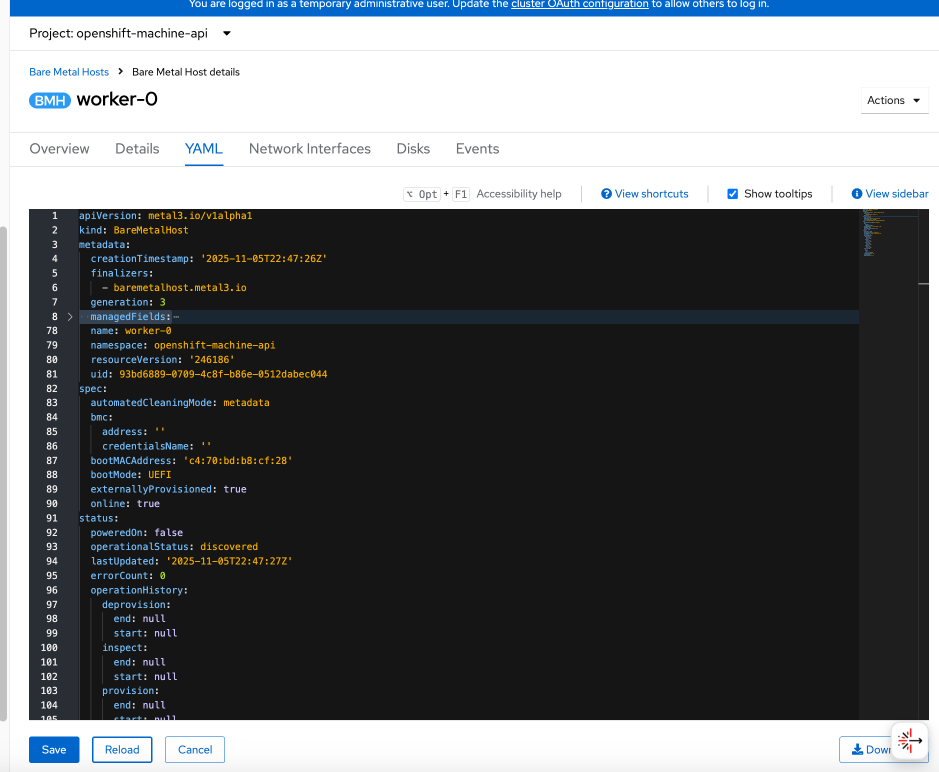

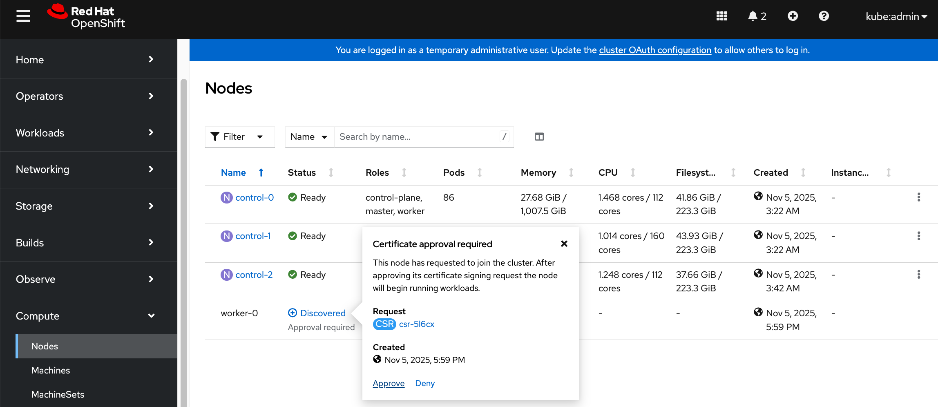

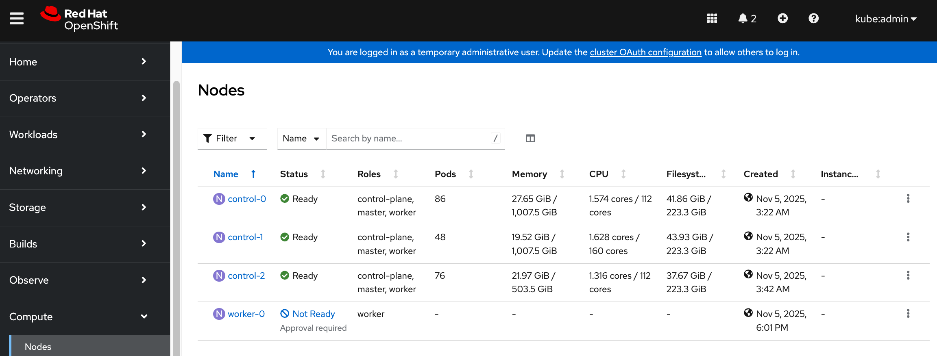

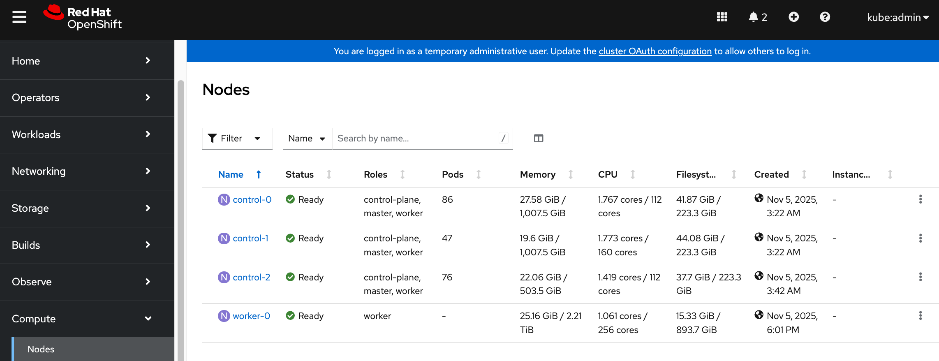

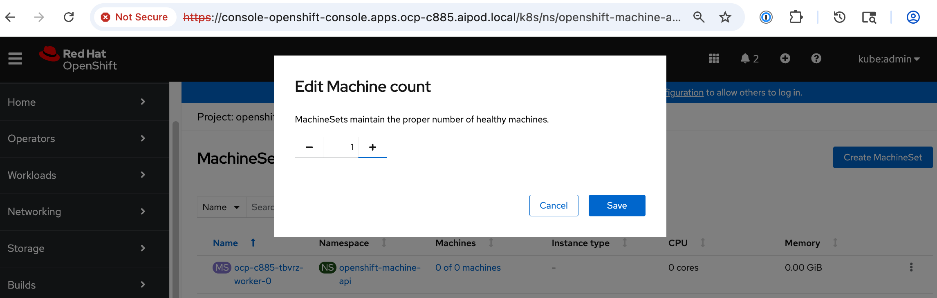

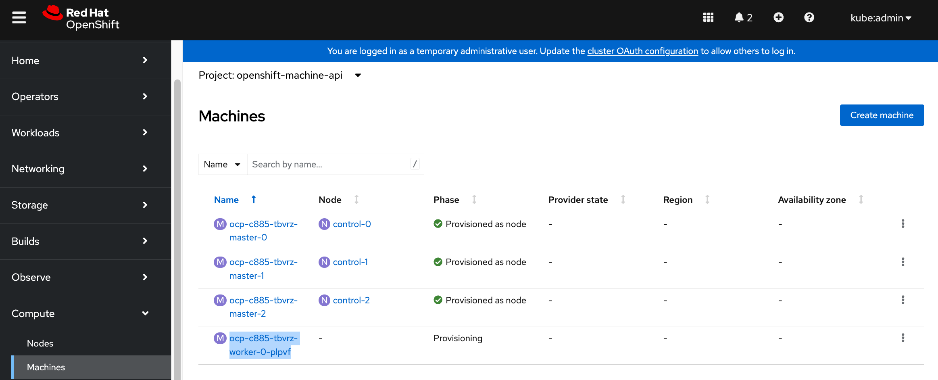

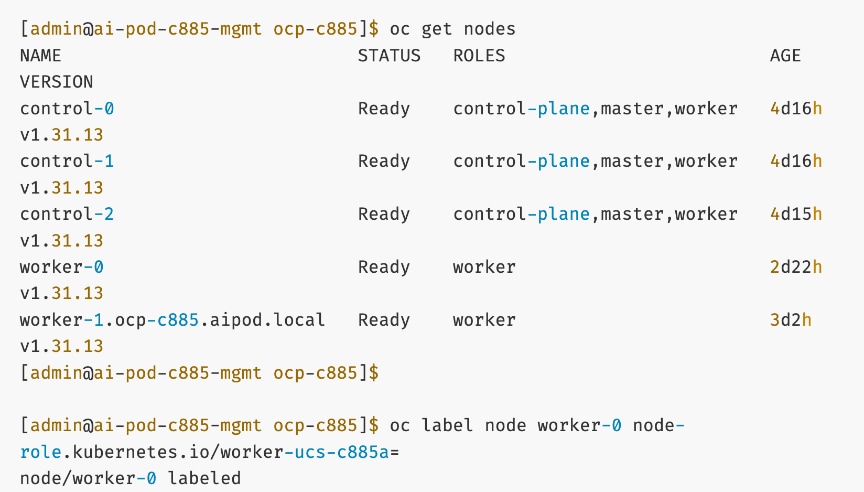

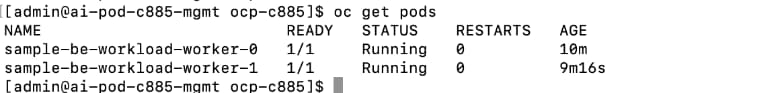

| CVD_09 |

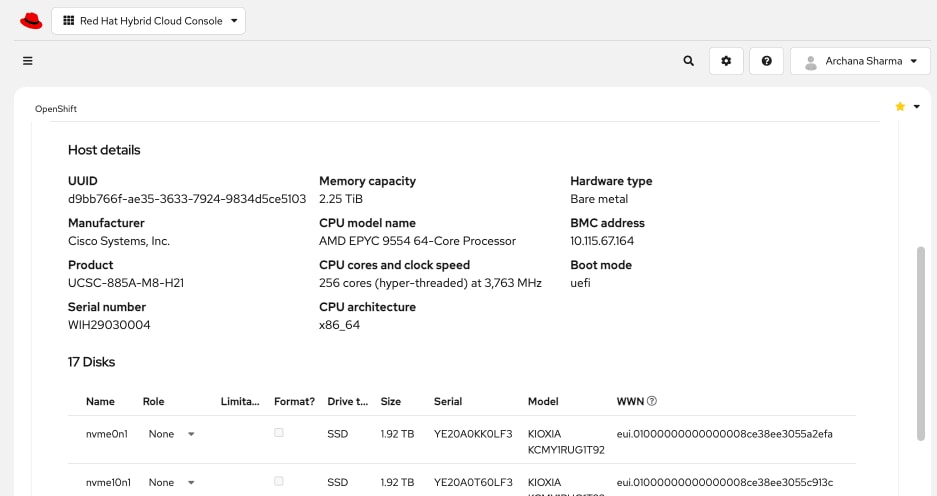

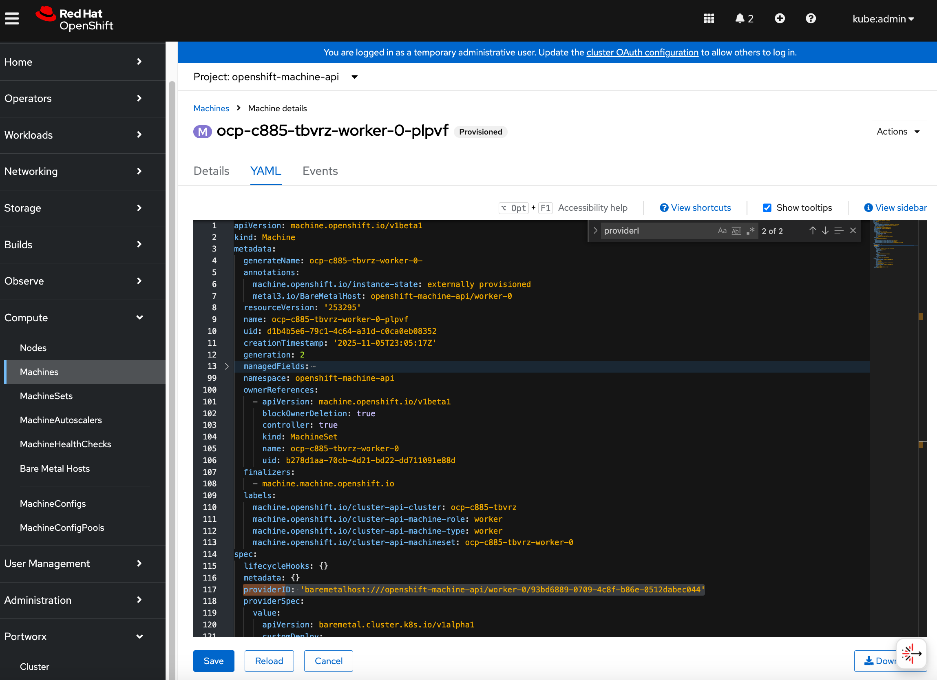

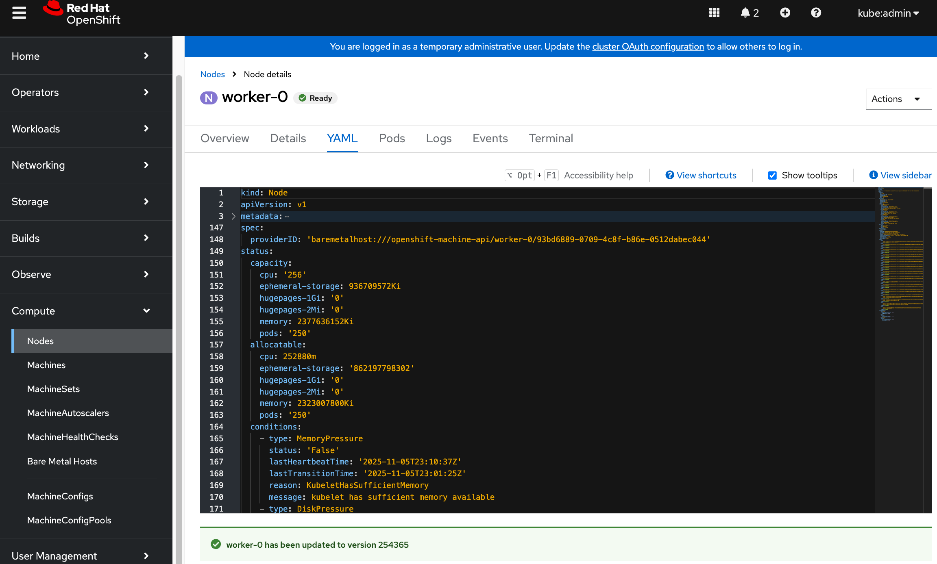

Add Cisco UCS C885A nodes to the OpenShift cluster from Red Hat Hybrid Cloud Console

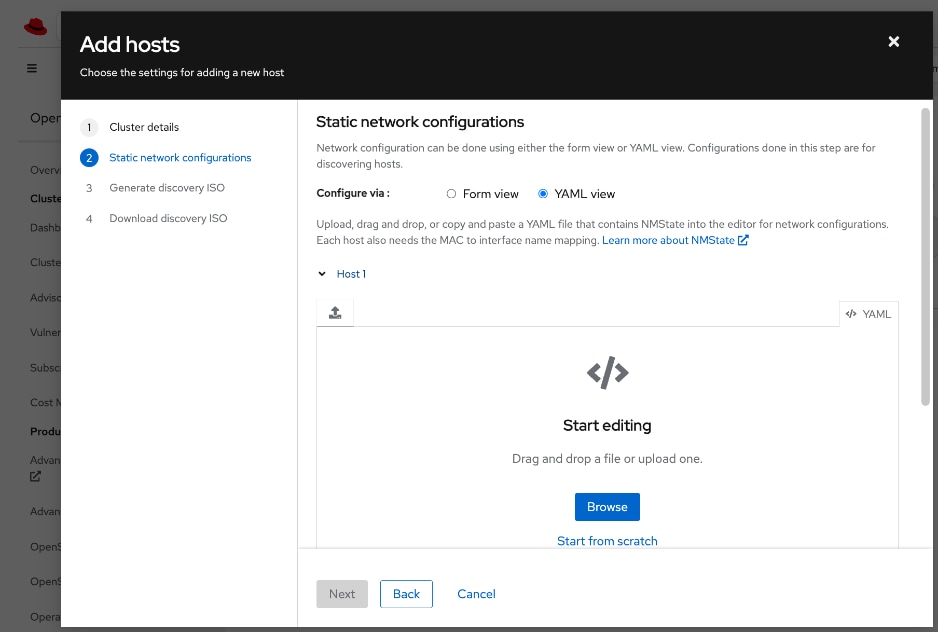

● Networking configuration for UCS C885A worker nodes is specified using

Static IP, bridges and bondsStatic IP, bridges and bonds option in the Assisted Installer. The two ports on the FE NIC are configured as an LACP bond with the OpenShift Cluster IP VLAN added as trunked VLAN to this bond. You will need the mac address of both FE interfaces collected earlier.

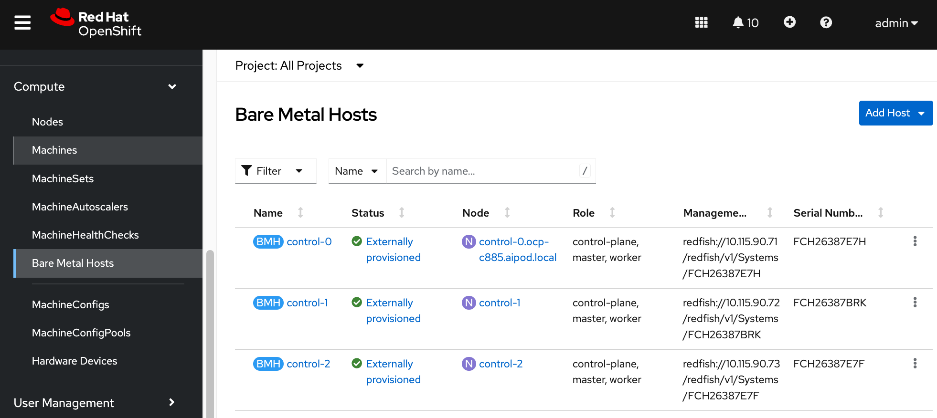

● Set up UCS server as a bare metal host from OpenShift cluster console (or via CLI from OpenShift Installer machine)

● Provision power management for UCS C885A using Redfish

|

| CVD_10 |

Set up Networking for Storage Access using NFS

● Deploy Red Hat Kubernetes NMState Operator

● Configure UCS worker node(s) for NFS storage access

● Verify the connectivity to Everpure FlashBlade using NFS network

|

| CVD_11 |

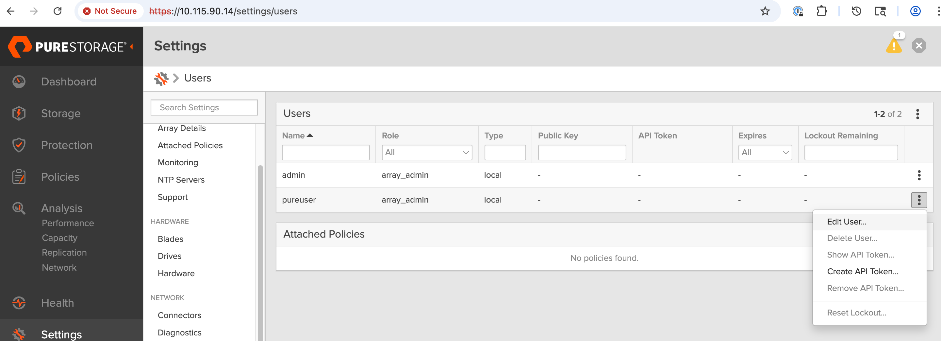

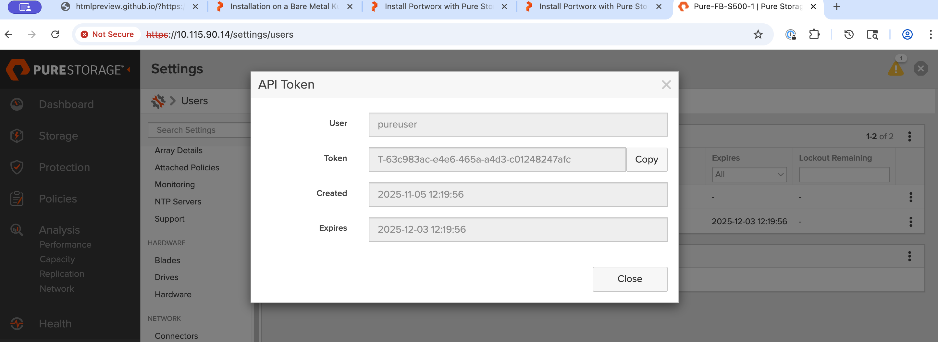

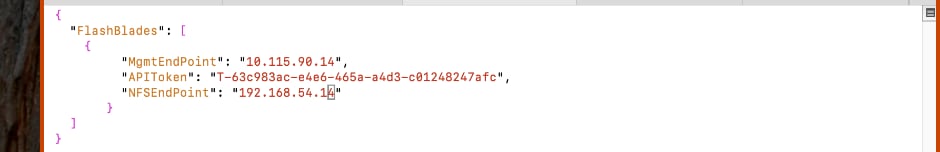

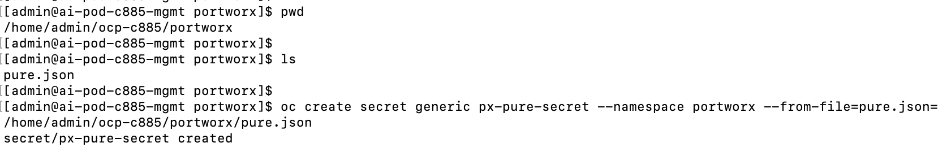

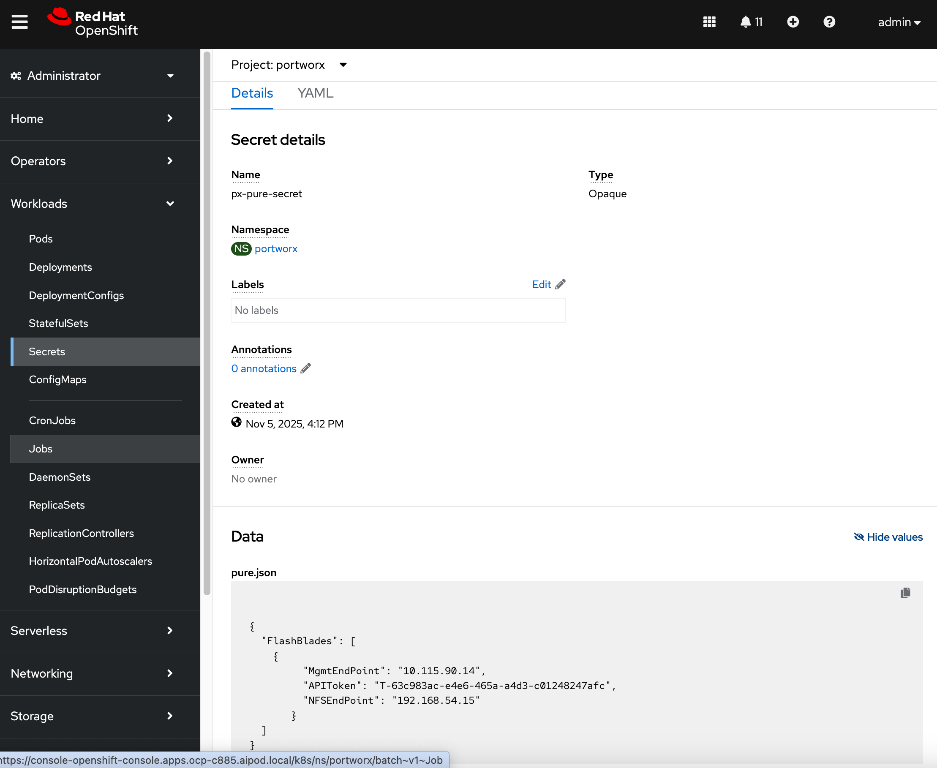

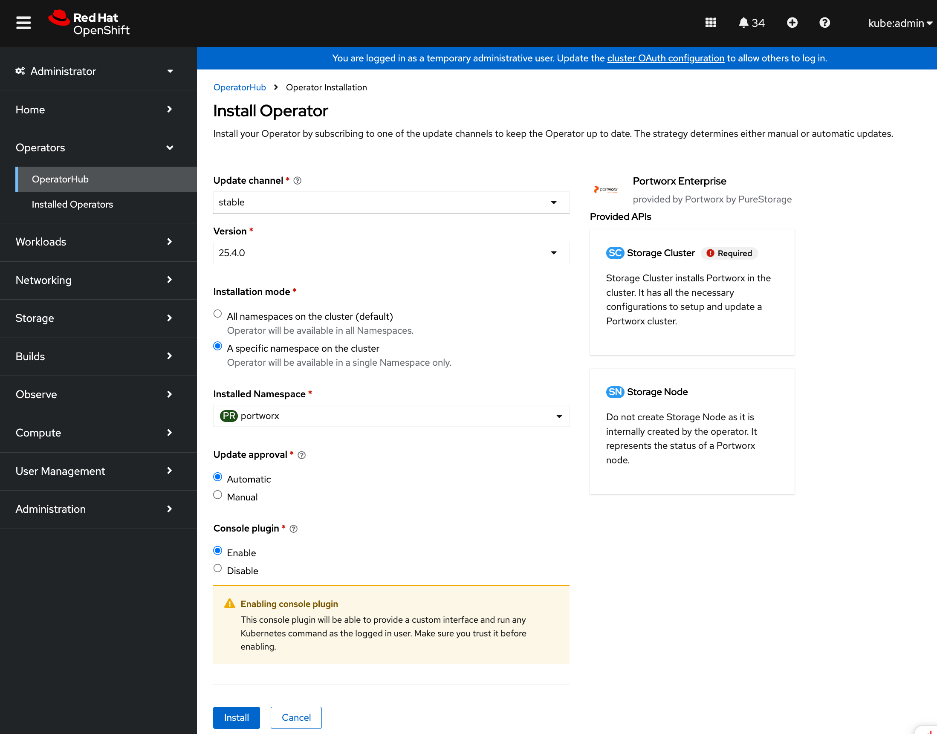

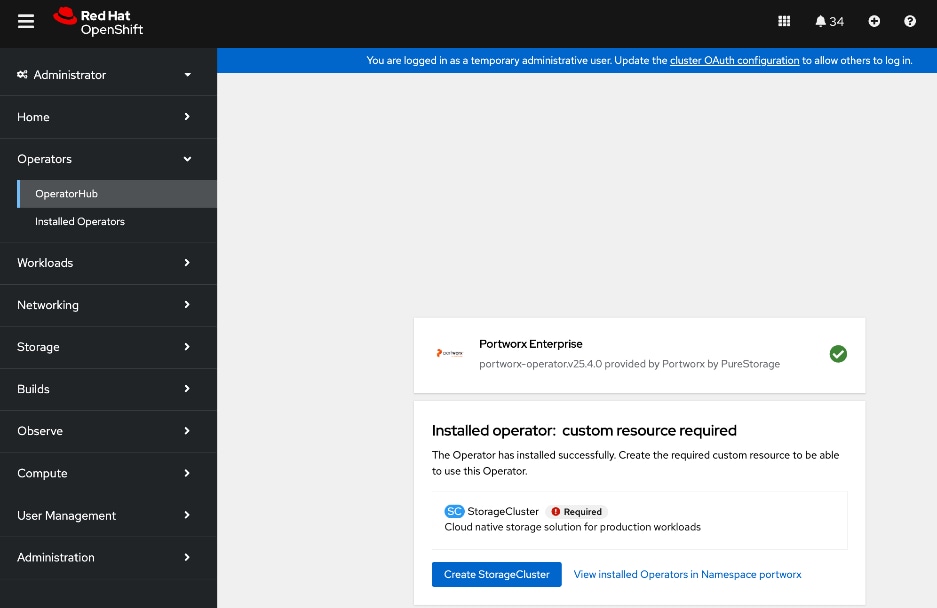

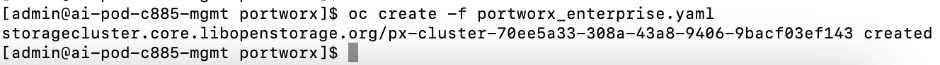

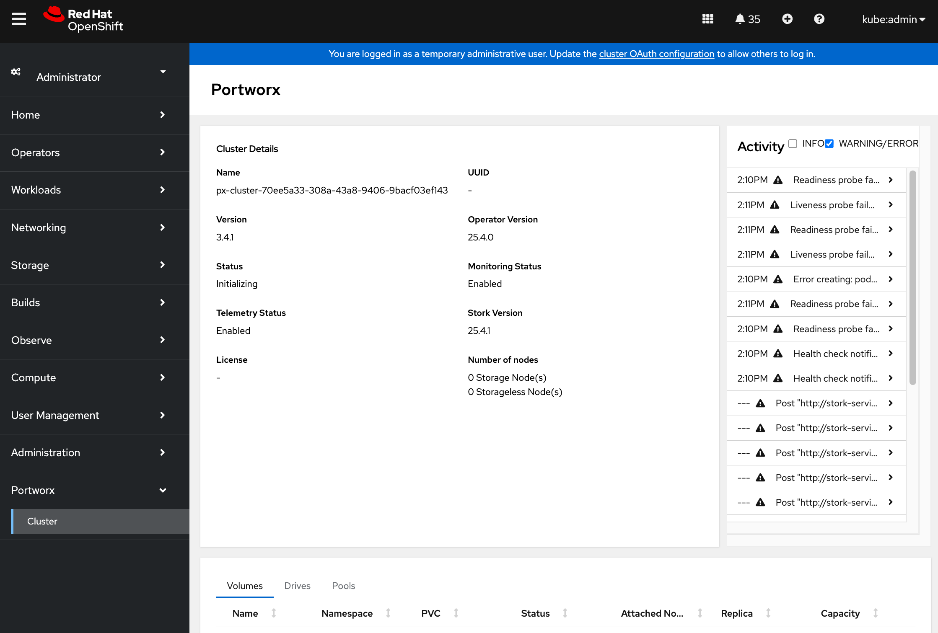

Deploy persistent storage using Portworx by Everpure

● Create API token on Everpure FlashBlade for use by Portworx

● Deploy Kubernetes secret to securely access Everpure FlashBlade

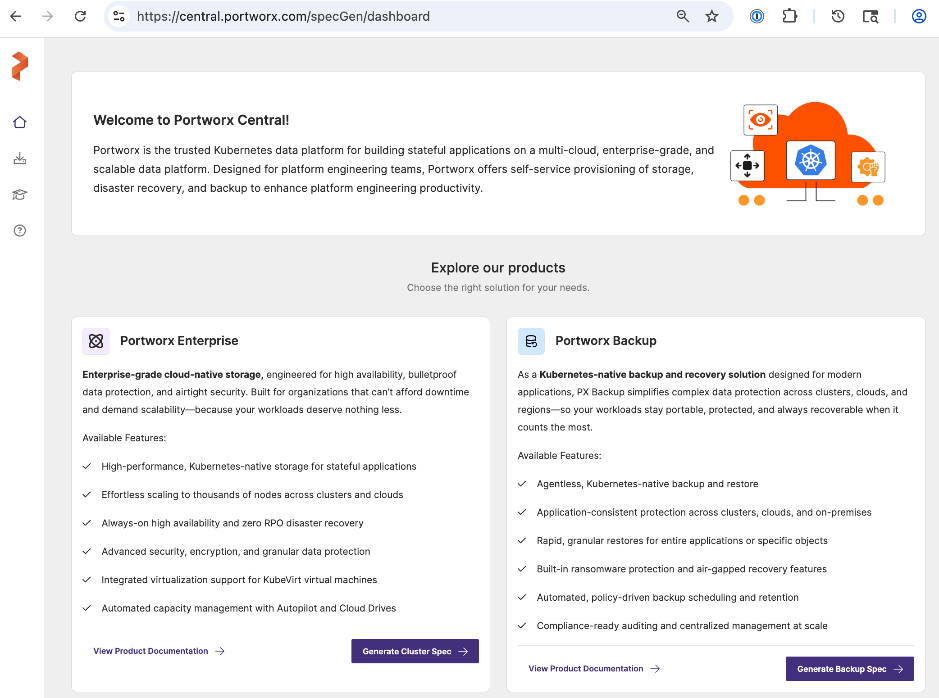

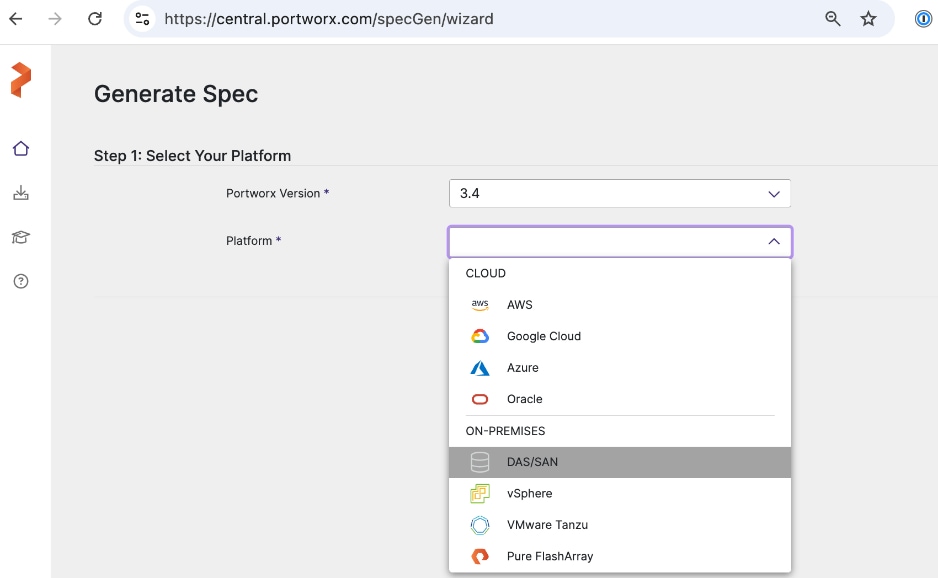

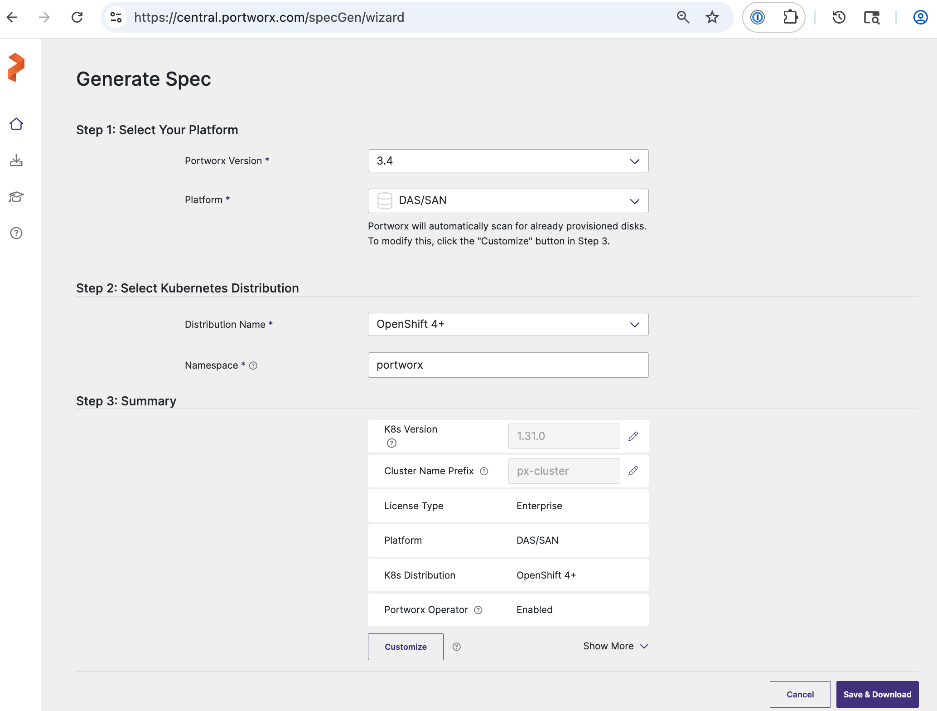

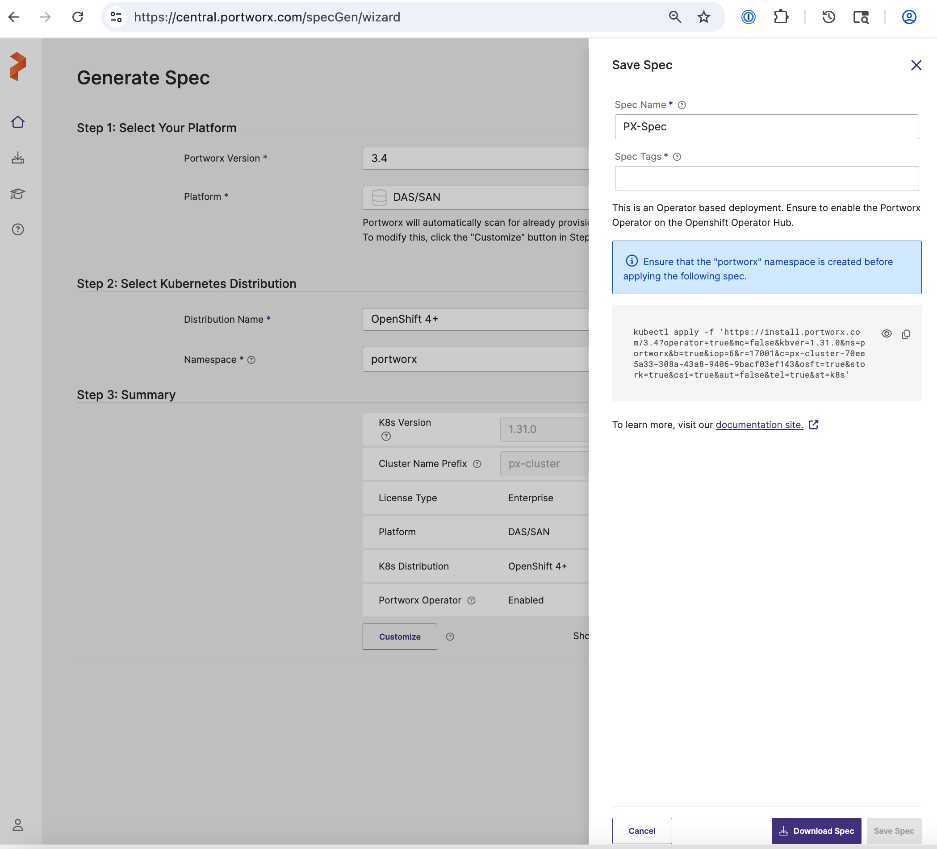

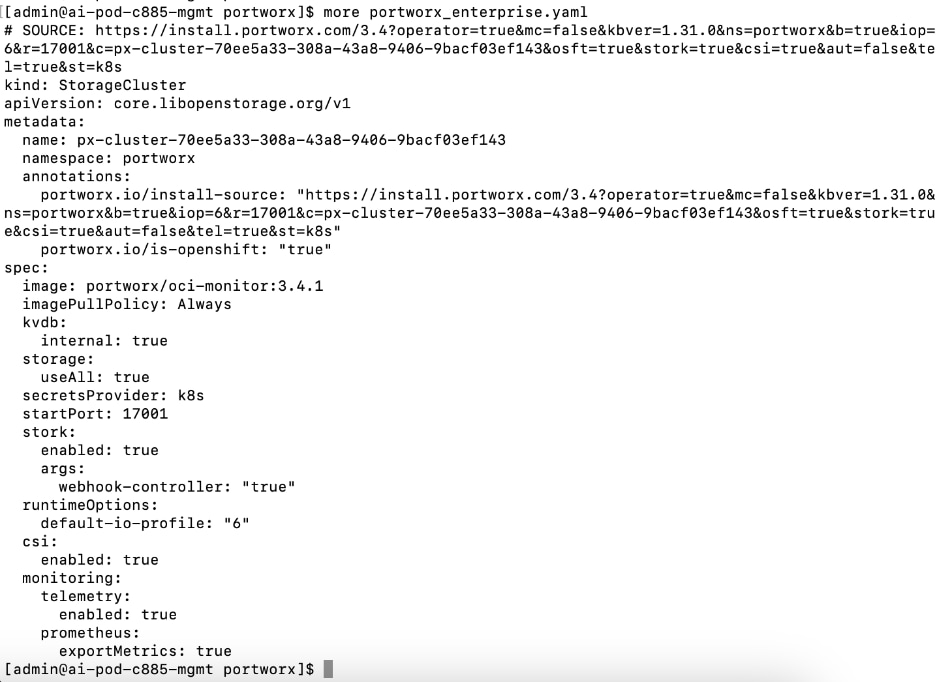

● Generate Portworx Enterprise Specification from Portworx Central

● Deploy Portworx Enterprise Operator from Red Hat OpenShift Cluster Console

● Verify that Portworx cluster is up and running in OpenShift

|

| CVD_12 |

Setup Portworx for NFS over TCP access to storage

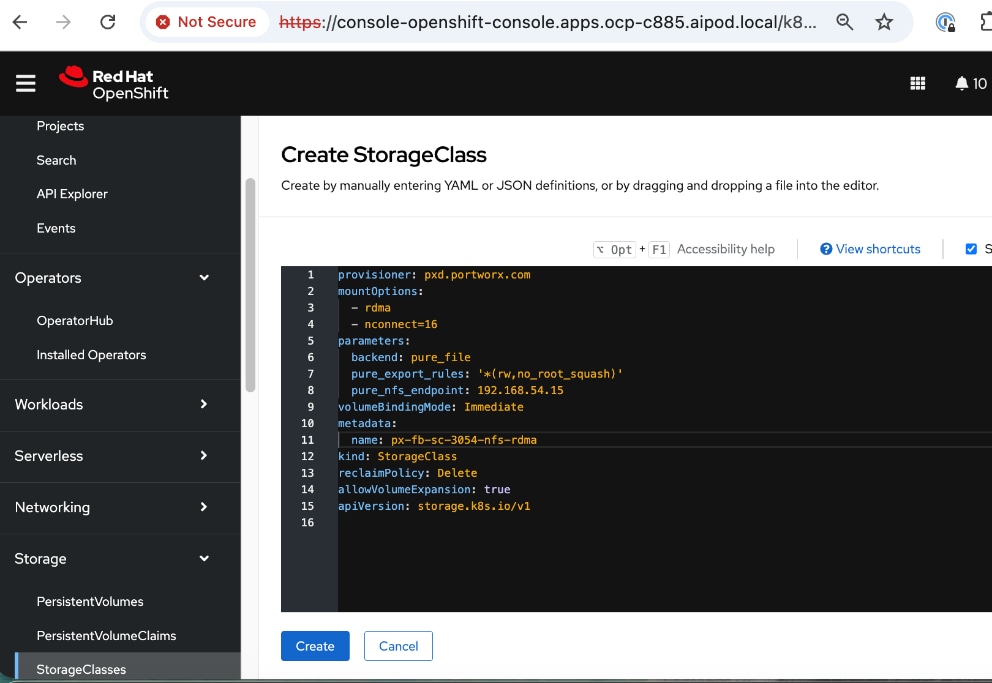

● Create Storage Class for NFS over TCP to Everpure FlashBlade

● Provision the newly created storage classes as the default storage class (Optional)

● Create a Persistent Volume Claim to verify setup

|

| CVD_13 |

Deploy NVIDIA GPU Operator in Red Hat OpenShift

● Deploy Red Hat Feature Discovery Operator. Verify that the worker nodes with GPUs are identified and labelled. Label should be (

pcie-10de.present=truepcie-10de.present=true)

● Deploy NVIDA GPU Operator

● Enable Data Center GPU Monitoring (DCGM) dashboard in OpenShift cluster console:

https://docs.nvidia.com/datacenter/cloud-native/openshift/latest/enable-gpu-monitoring-dashboard.htmlhttps://docs.nvidia.com/datacenter/cloud-native/openshift/latest/enable-gpu-monitoring-dashboard.html

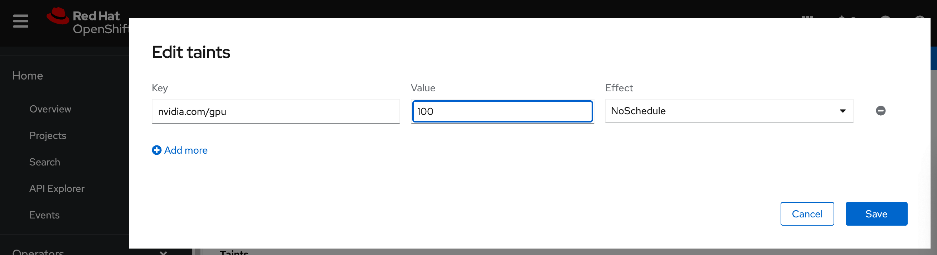

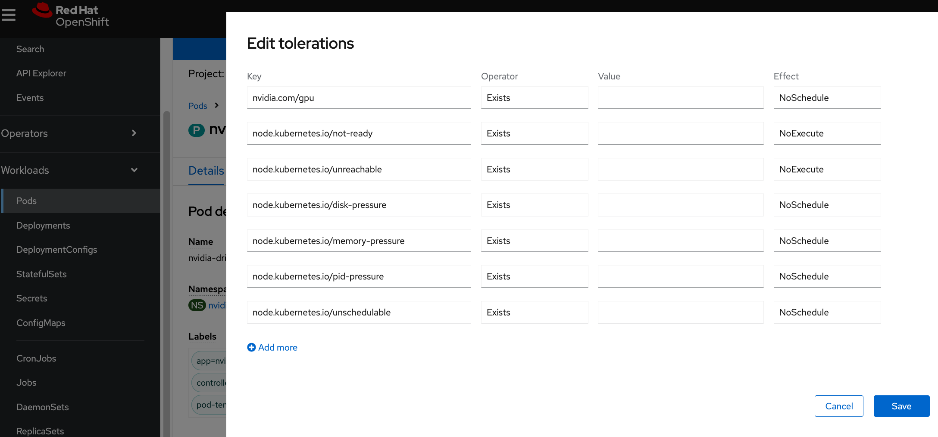

● Set up taints on worker nodes and tolerations on workloads (or Pods)

|

| CVD_14 |

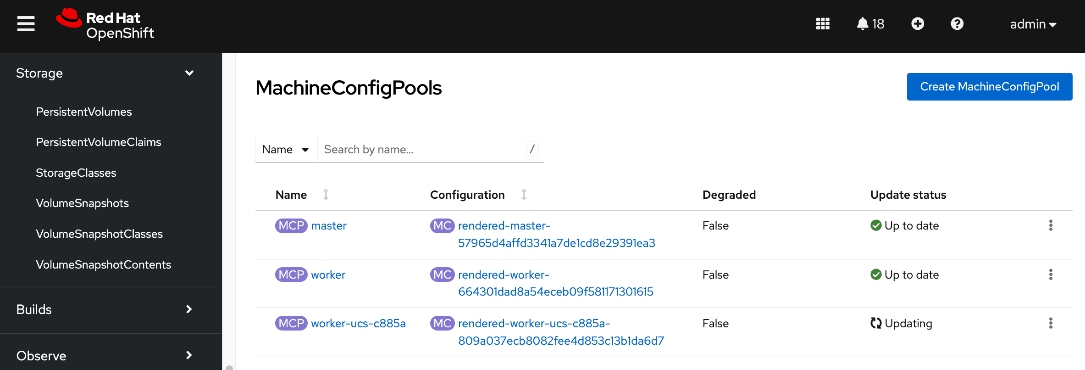

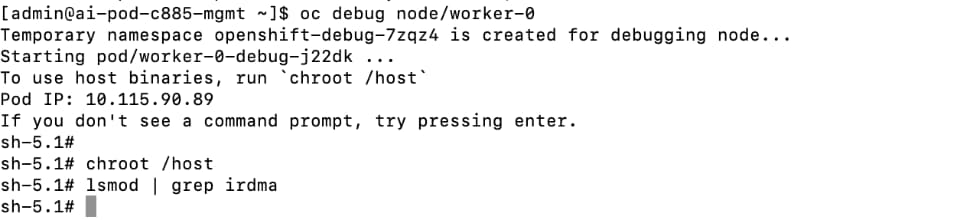

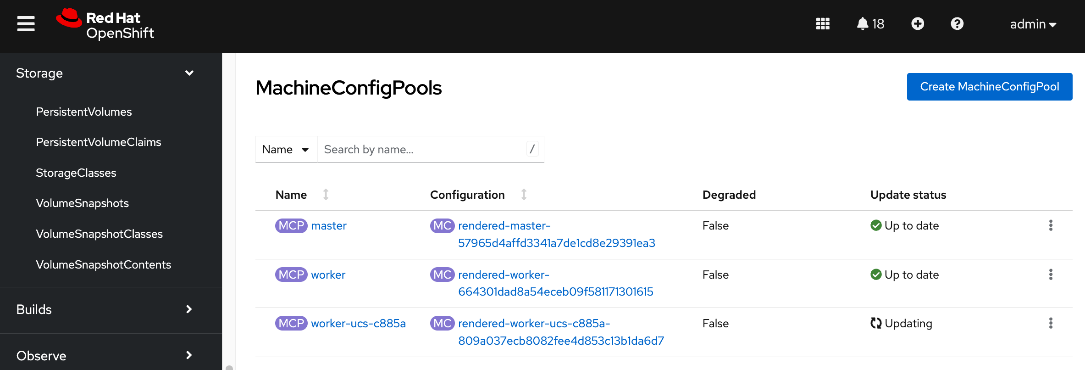

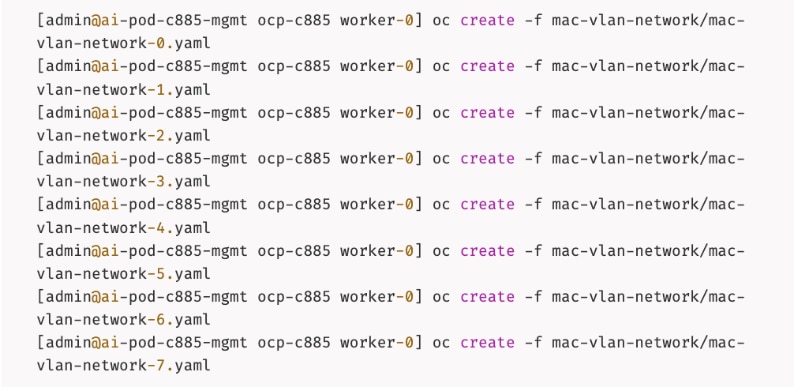

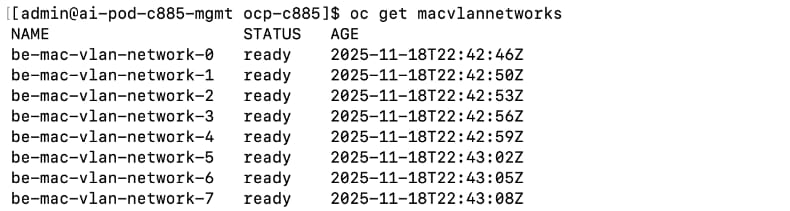

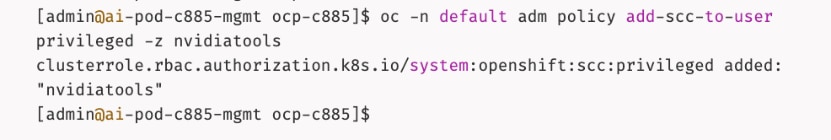

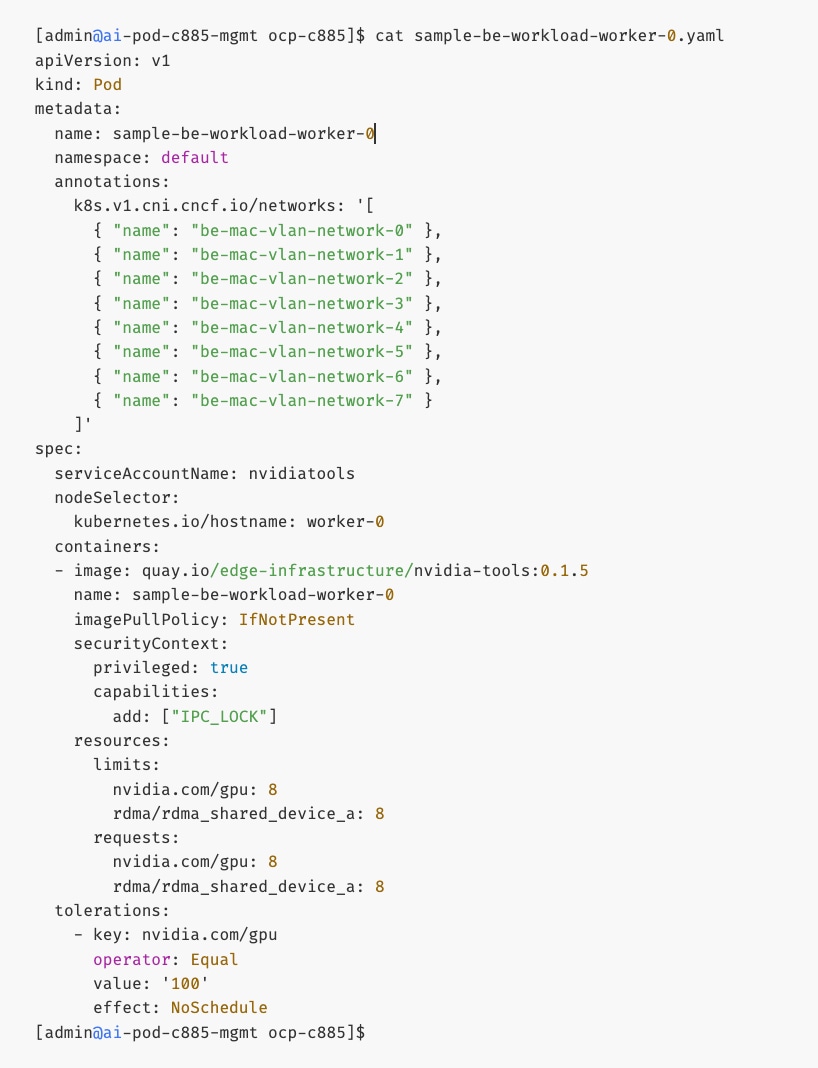

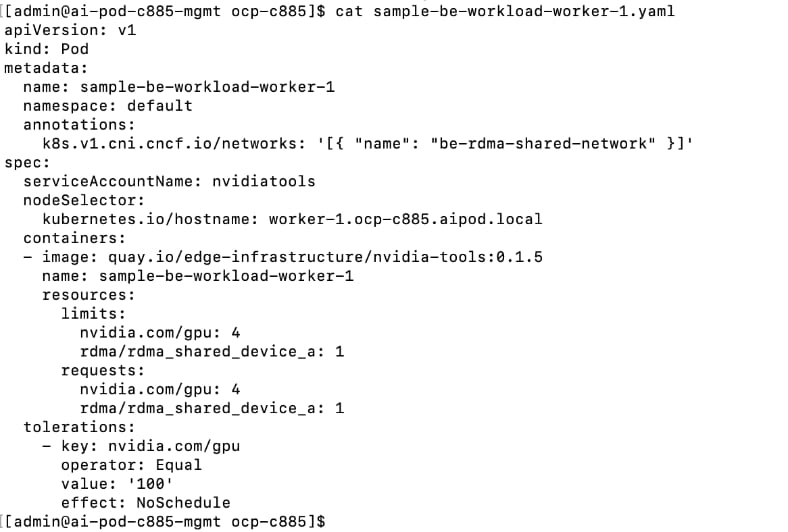

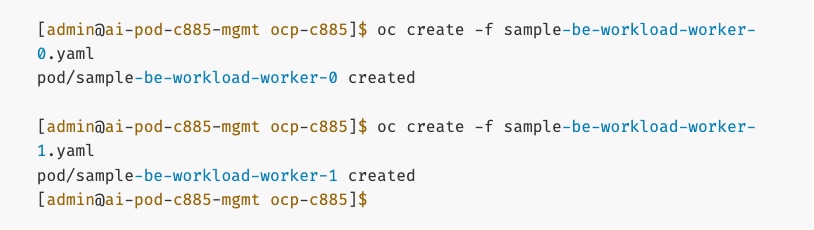

Deploy GPUDirect RDMA on Backend Fabric

● Log into

intersight.com and collect MAC Addresses for all E-W and N-S NICs and interface names for the C885As.

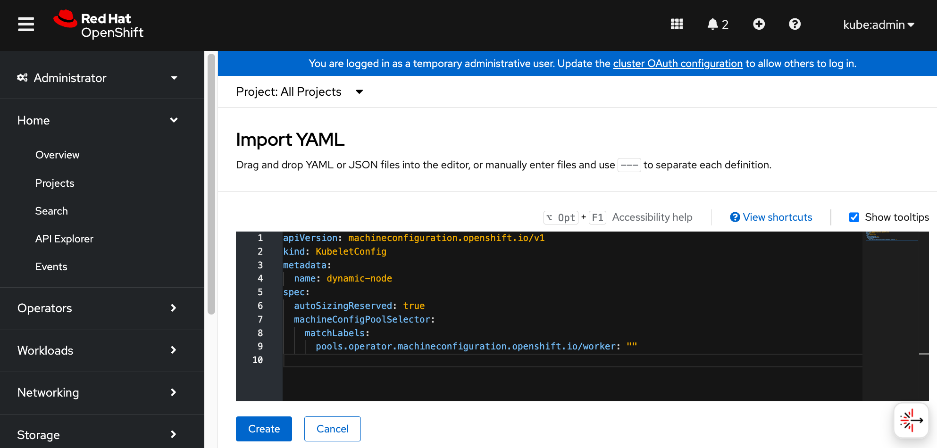

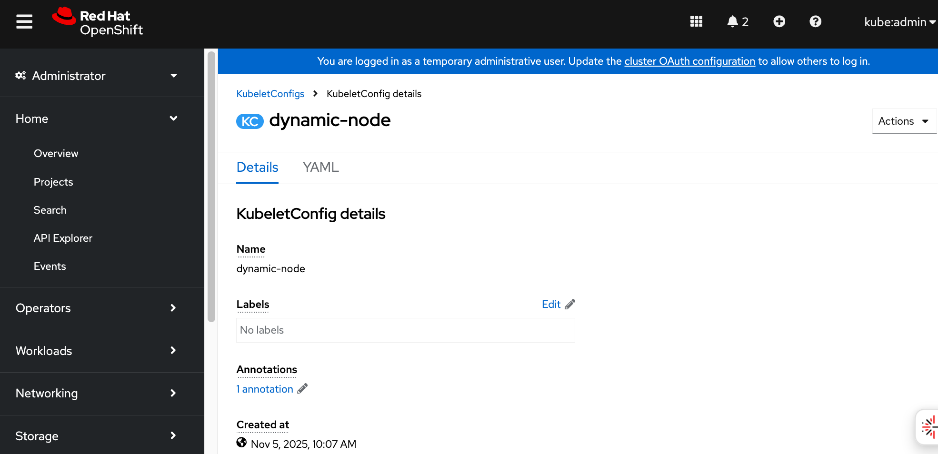

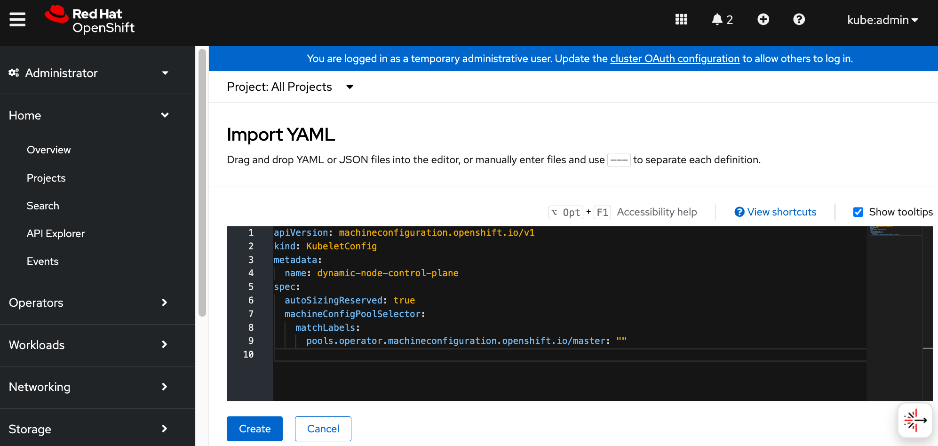

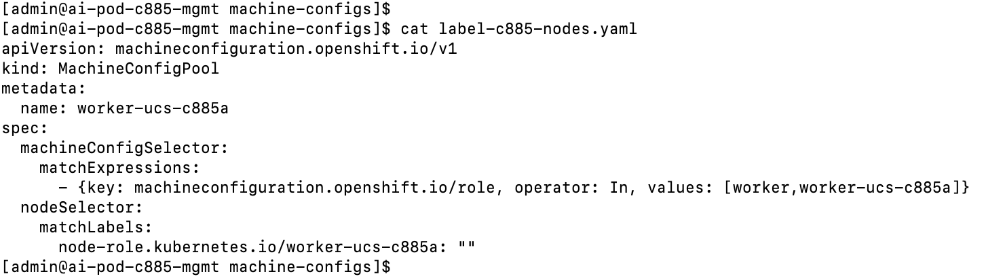

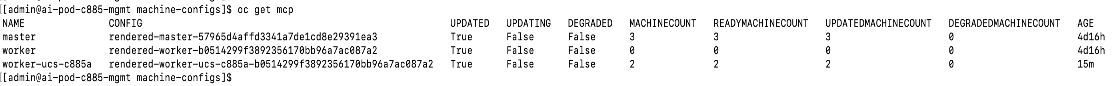

● Create and apply unique node label to UCS C885A nodes

● Create a new machine config pool with only UCS C885 GPU nodes

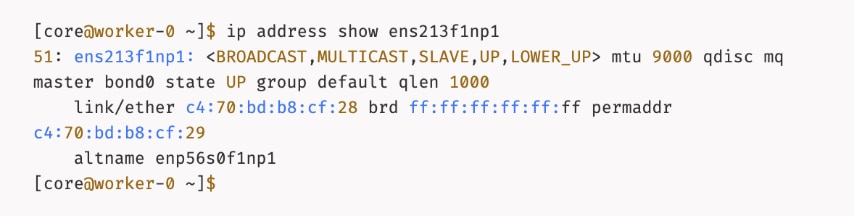

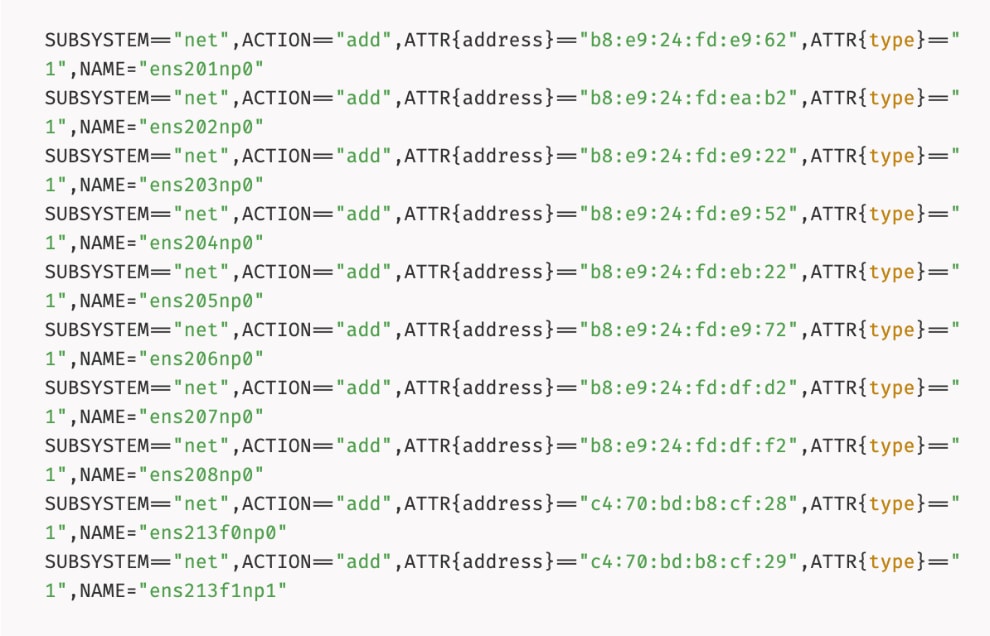

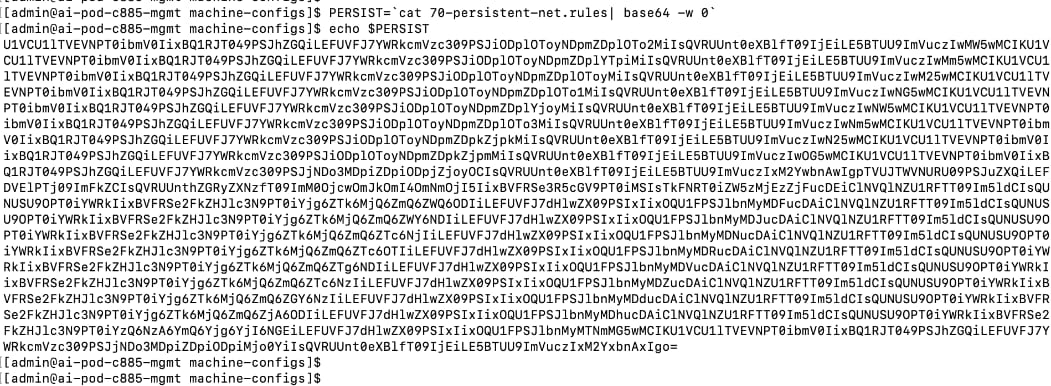

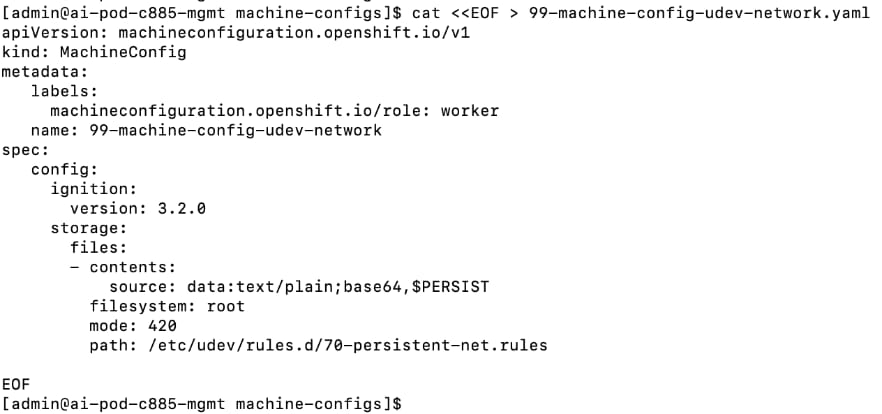

● Create persistent Interface Naming for all NVIDIA NICs

● Verify that NVIDIA network devices are present and labelled

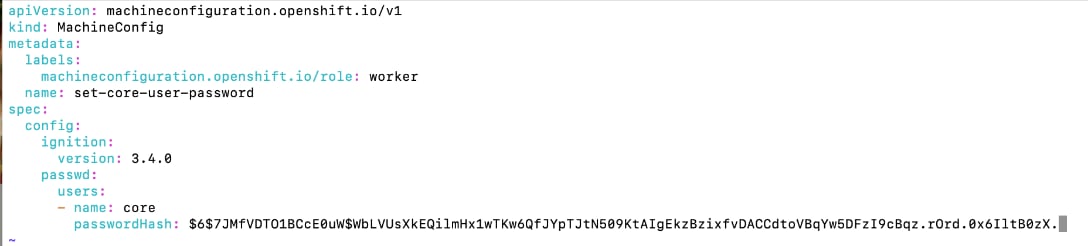

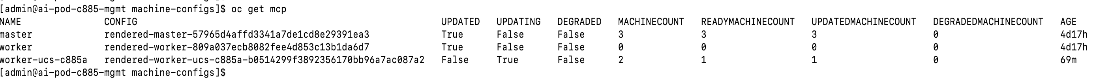

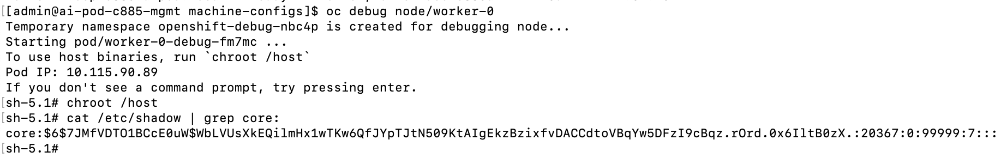

● Create password for core user to access node

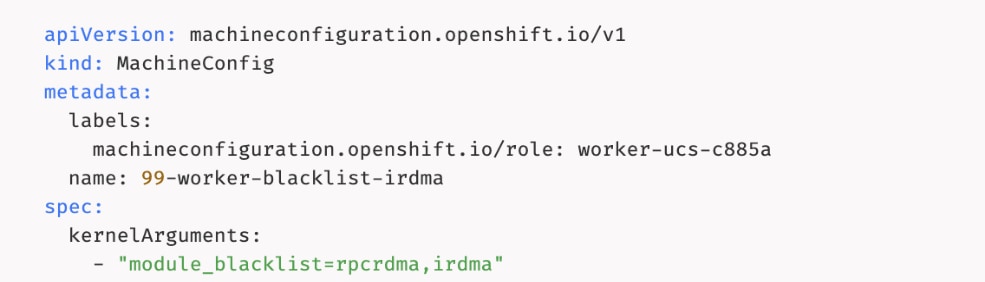

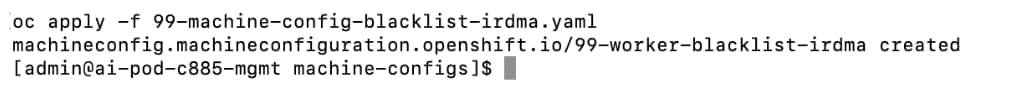

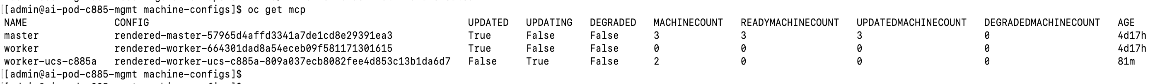

● Disable IRDMA and RPCRDMA Kernel Modules

● Deploy NVIDIA Network Operator from Red Hat Cluster Console

● Configure NIC Cluster Policy for NVIDIA Network Operator

● Set MTU to 9000 on NVIDIA backend NICs

● Create MAC VLAN Network to provision backend interfaces

● Deploy ARP and RP policies

● Update GPU Cluster Policy

Note: If you run into issues with NIC Cluster policy coming up, you may need to put Portworx into maintenance mode and/or remove GPU operator.

|

| CVD_15 |

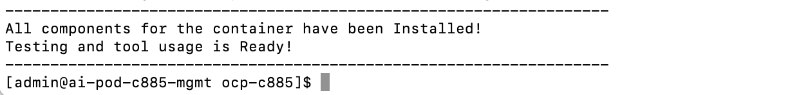

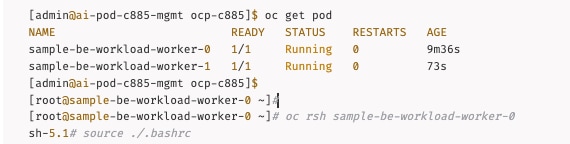

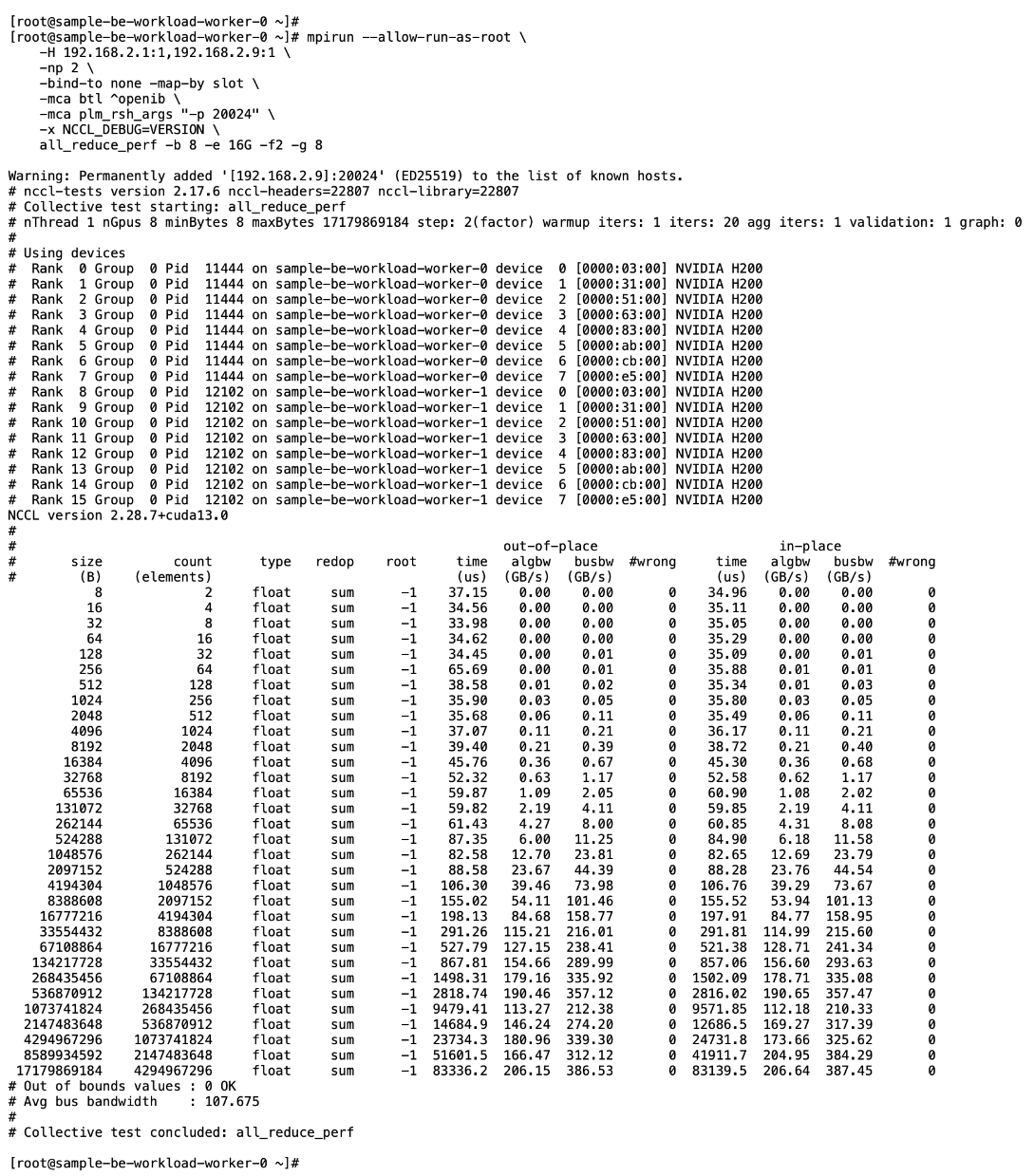

Validate GPUDirect RDMA

● Deploy workload to test and verify GPUDirect RDMA

● Use

IB_WRITE IB_WRITE tests to validate RDMA traffic across backend fabric

● Use

NCCL NCCL tests to validate inter-node GPU-to-GPU RDMA traffic across backend fabric

|

| CVD_16 |

Setup Portworx for NFS over RDMA access to storage

● Provision Kubernetes storage class to use NFS over RDMA with Portworx, backed by FlashBlade

● Put Portworx in maintenance mode

● Remove previously deployed NVIDIA GPU operator(if present)

● Update NVIDIA NIC Cluster policy to use RDMA

● Deploy GPU Operator and Cluster Policy

● Take Portworx out of maintenance mode

● Validate NFS over RDMA to Everpure

Note: Unlike deployment steps for “Deploy GPUDirect RDMA on Backend Fabric” earlier, the above procedures put Portworx in maintenance mode and removes GPU cluster policy before NIC cluster policy update.

|

| CVD_17 |

Setup Portworx for GPUDirect Storage

● Provision Kubernetes storage class to use NFS over RDMA with Portworx, backed by FlashBlade

● Put Portworx in maintenance mode

● Remove previously deployed NVIDIA GPU operator(if present)

● Update NVIDIA NIC Cluster policy to use RDMA

● Deploy GPU Operator and Cluster Policy

● Take Portworx out of maintenance mode

● Validate GPUDirect Storage to Everpure

Note: Unlike deployment steps for “Deploy GPUDirect RDMA on Backend Fabric” earlier, the above procedures put Portworx in maintenance mode and removes GPU cluster policy before NIC cluster policy update. Deploy GPU Cluster Policy

|

| CVD_18 |

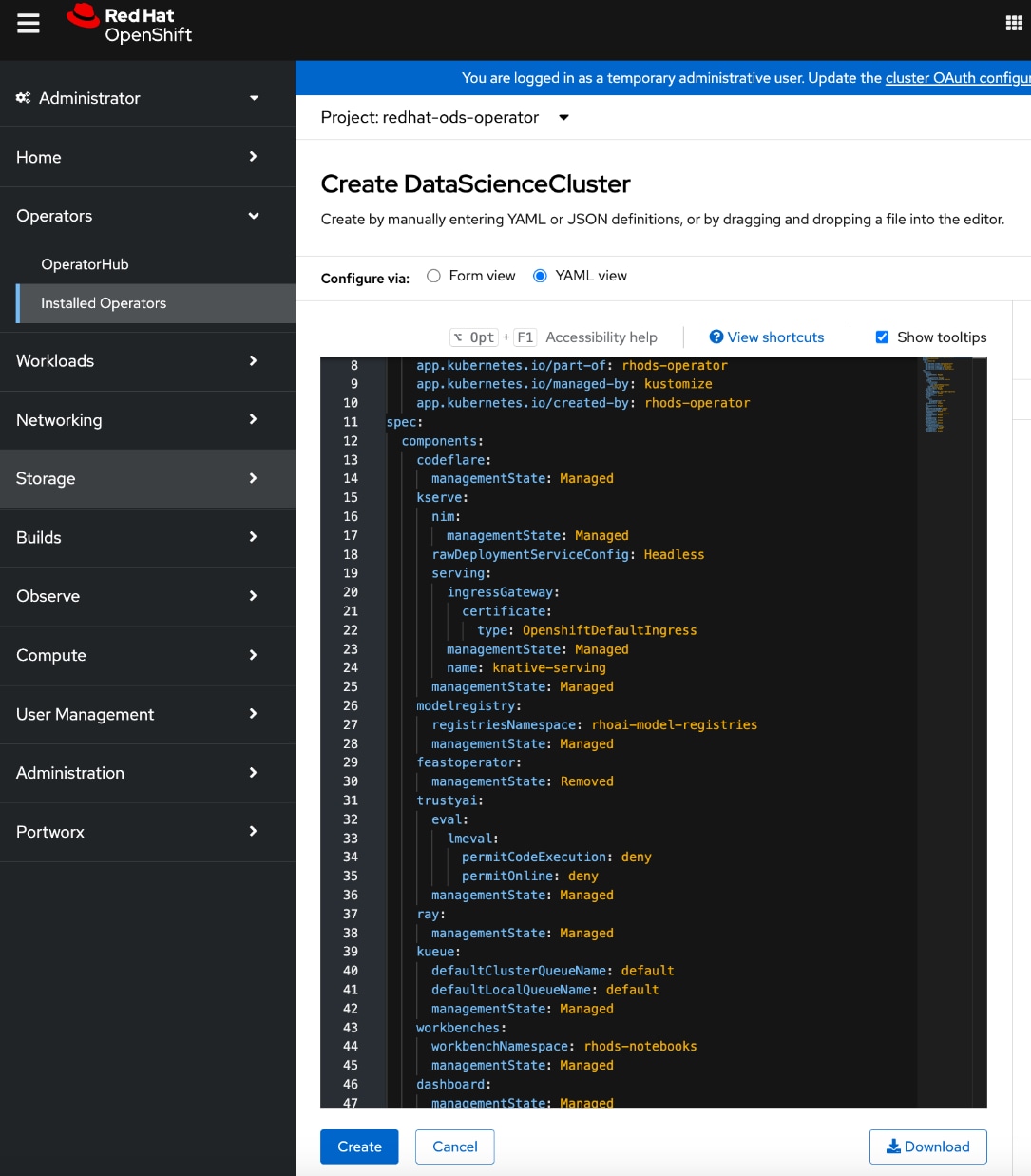

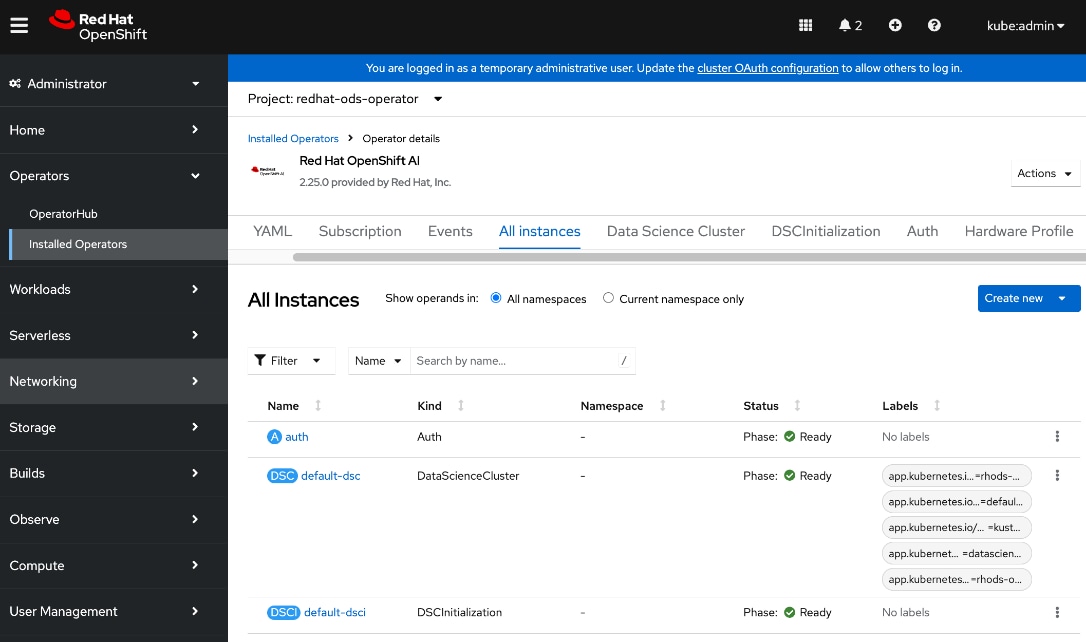

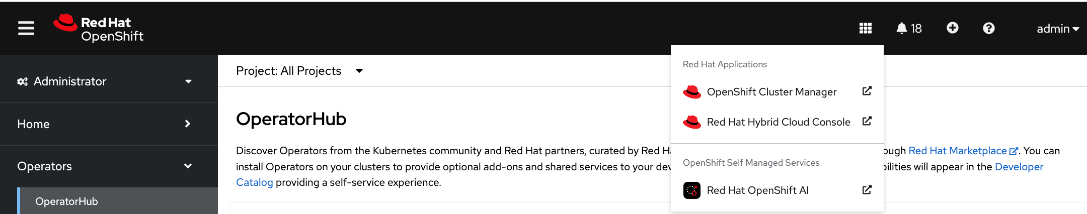

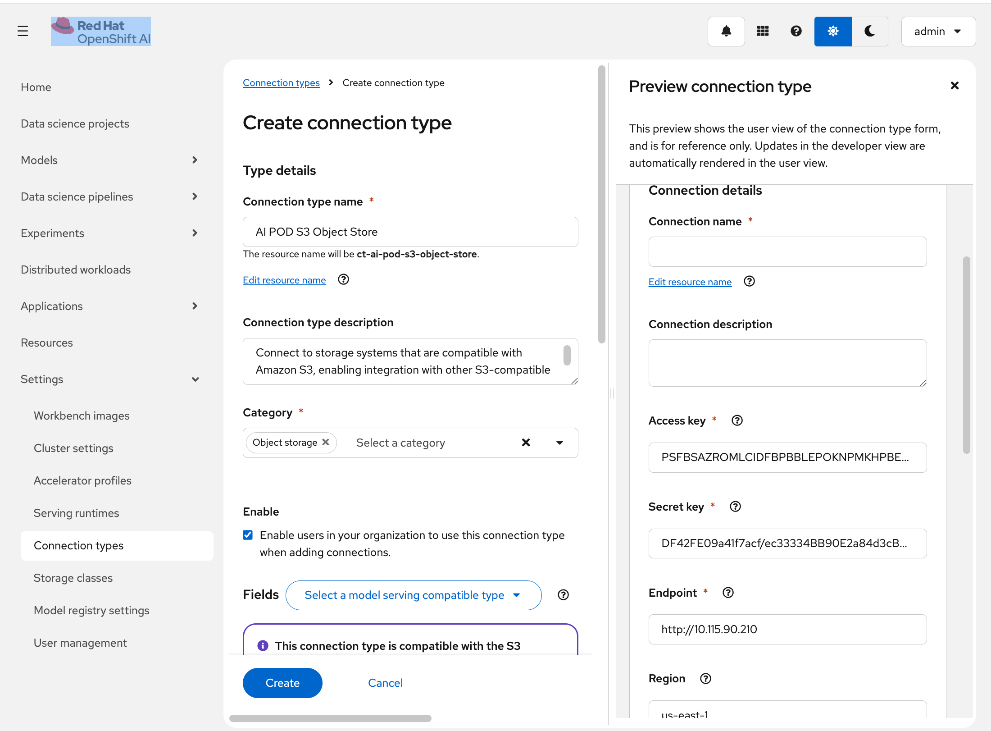

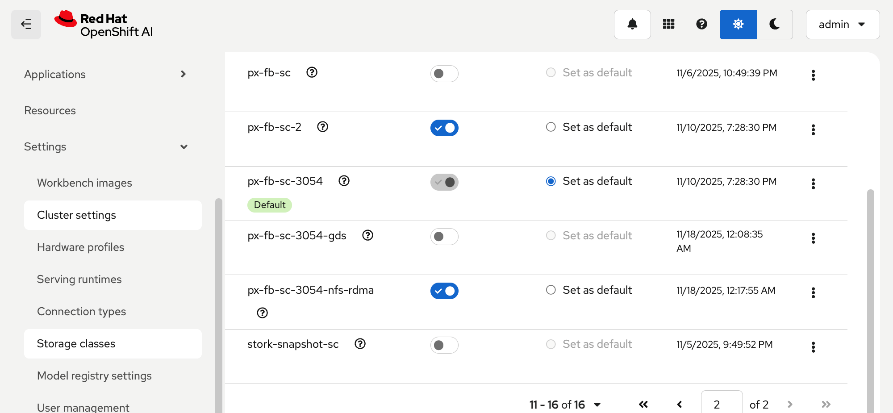

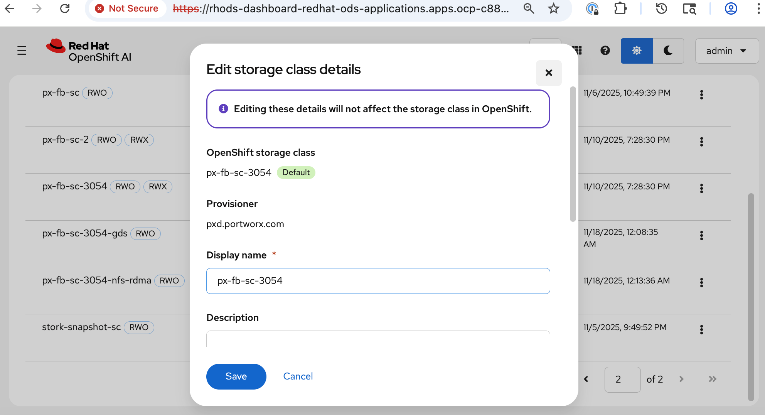

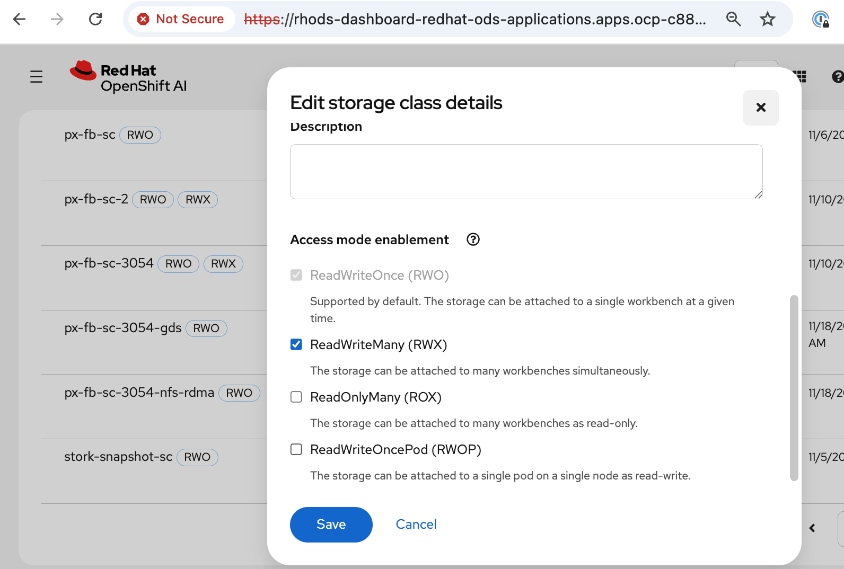

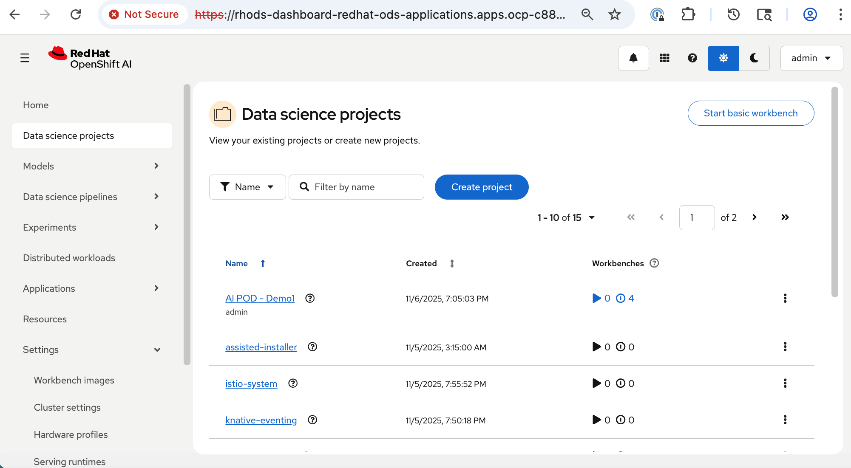

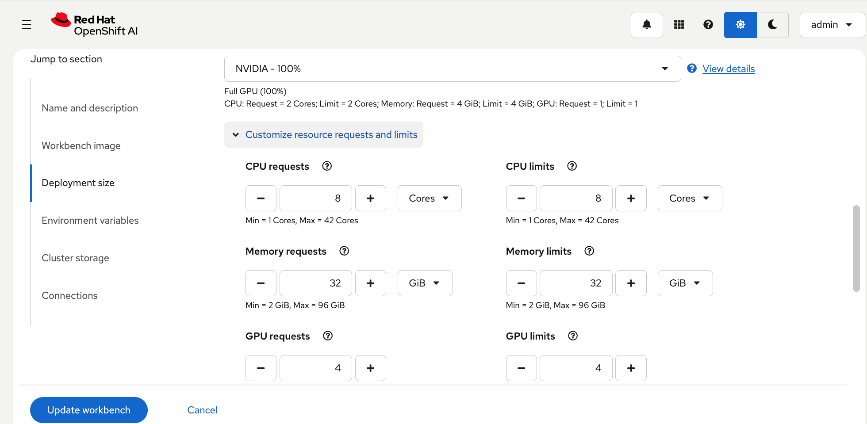

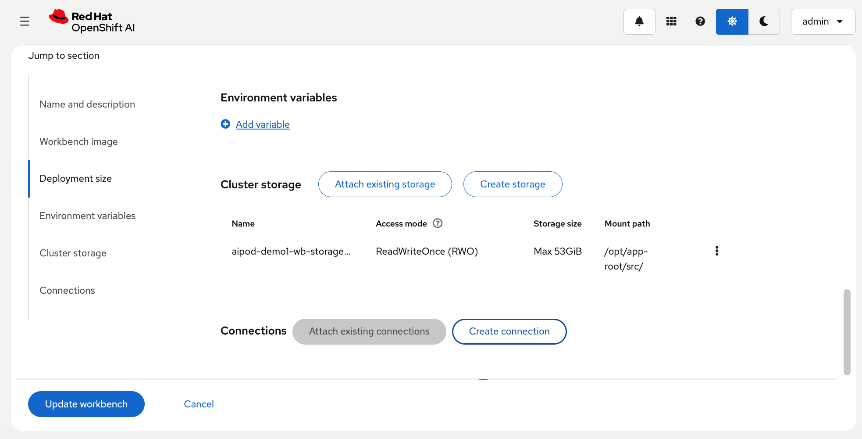

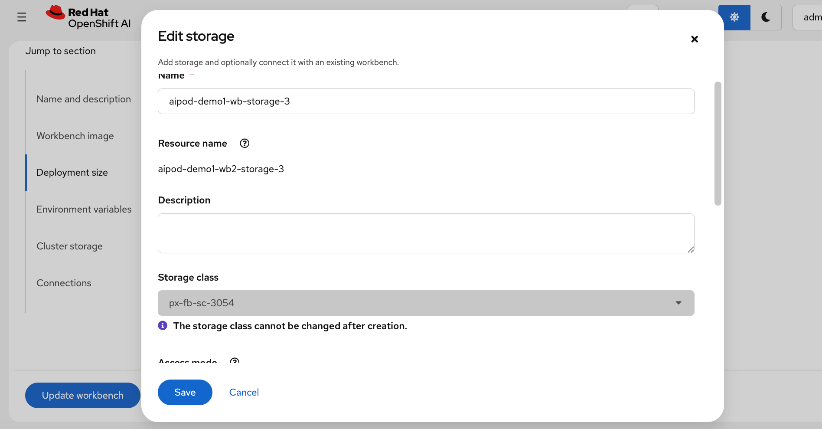

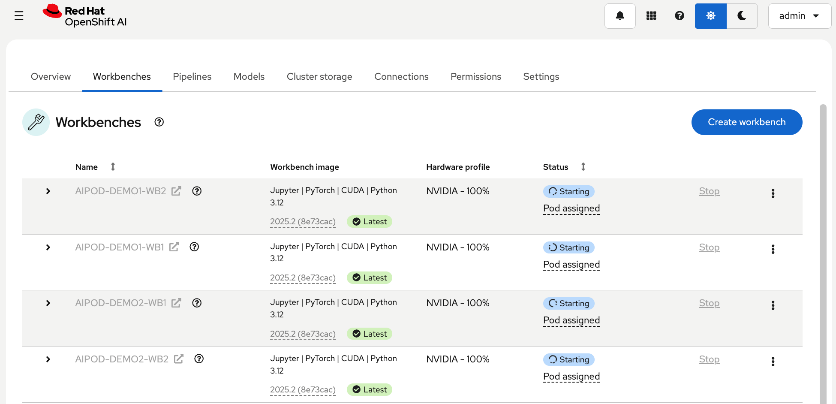

Deploy Red Hat OpenShift AI for MLOps

● Add users and administrator groups in OpenShift to enable for access to OpenShift AI web UI.

● Deploy Persistent storage for AI/ML efforts. In this solution, the ML engineer’s work (image, environment) is saved on persistent volumes, backed by Everpure FlashBlade.

● Deploy S3-compatible object store. In this CVD, it used as model repository and to store pipeline artifacts on Everpure FlashBlade

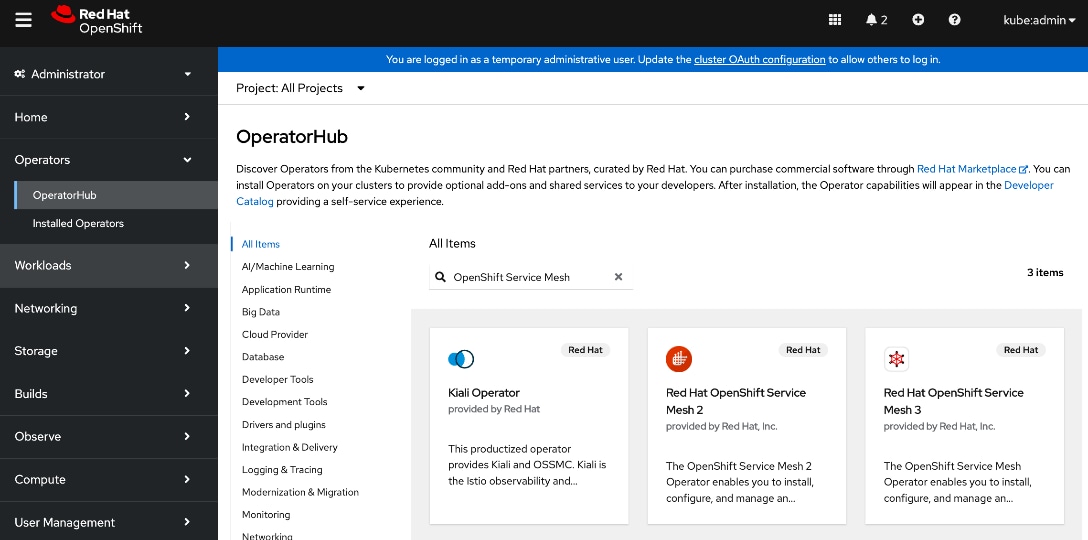

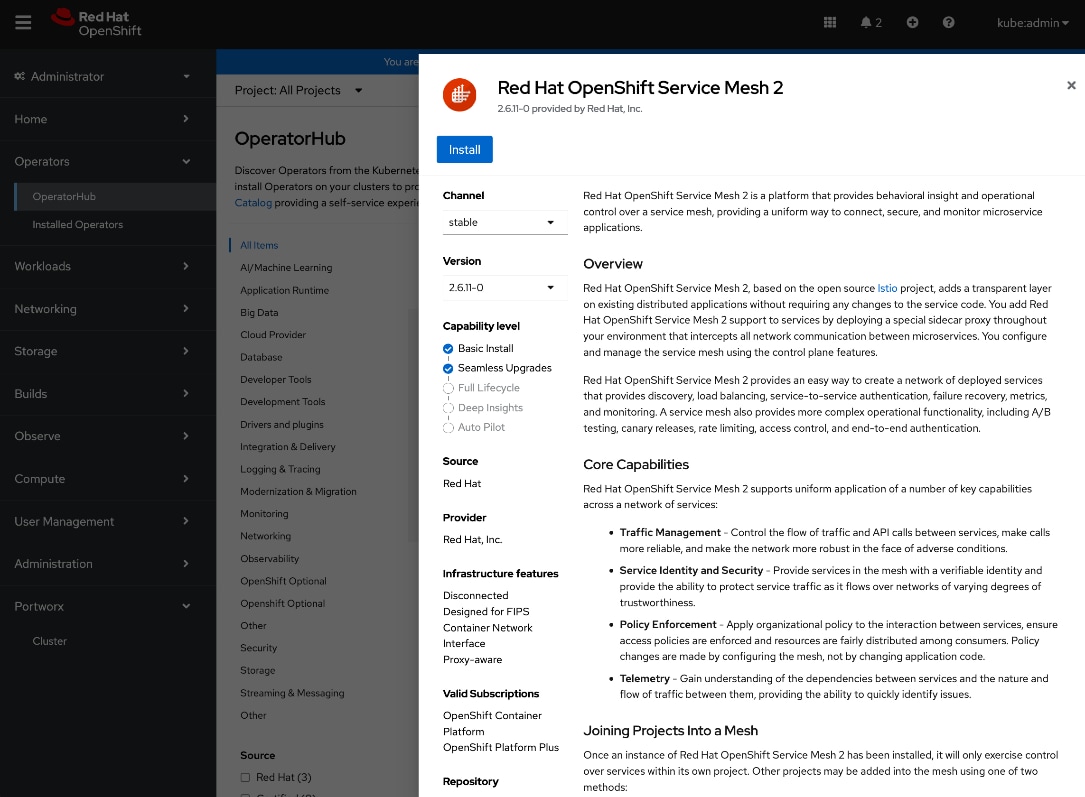

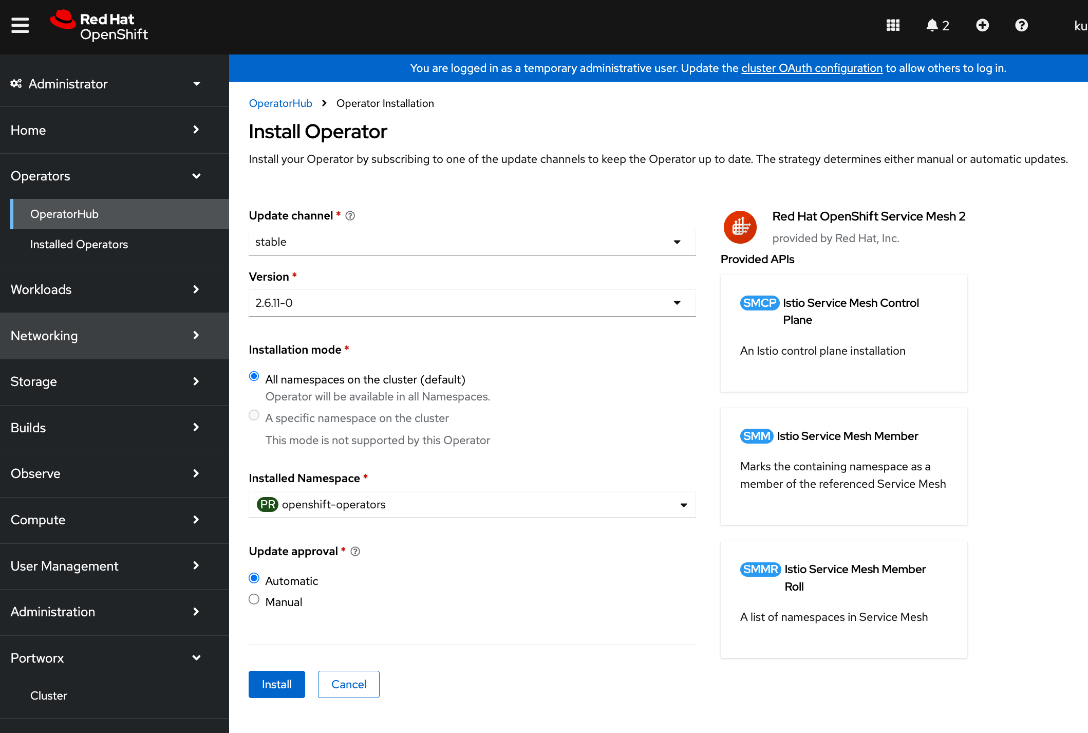

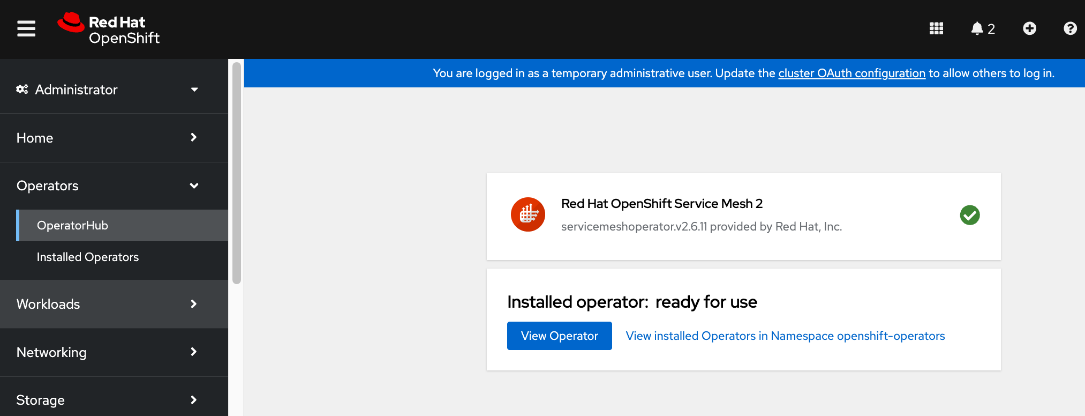

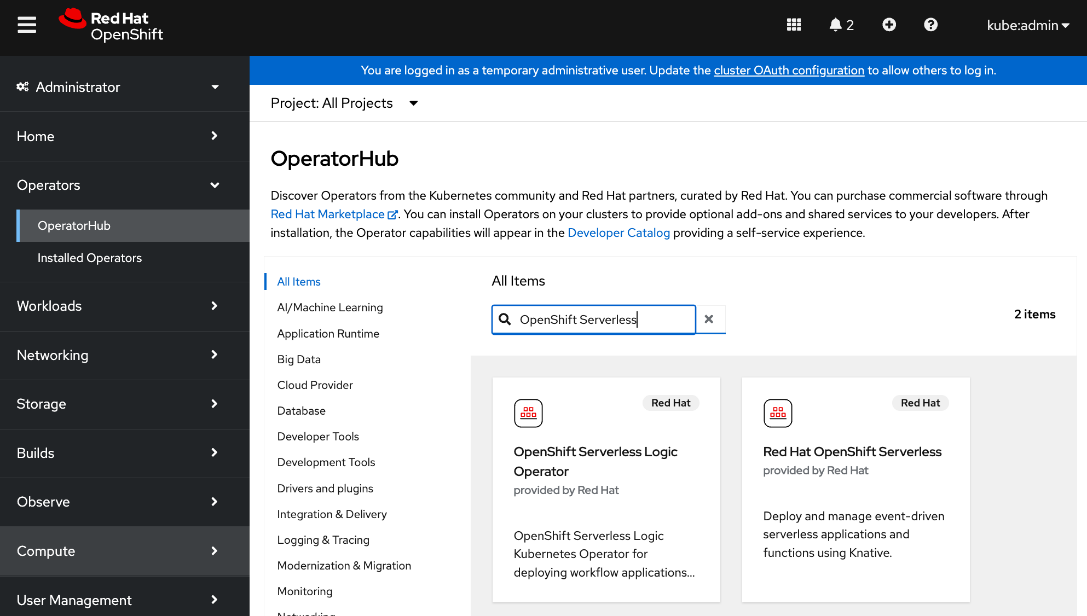

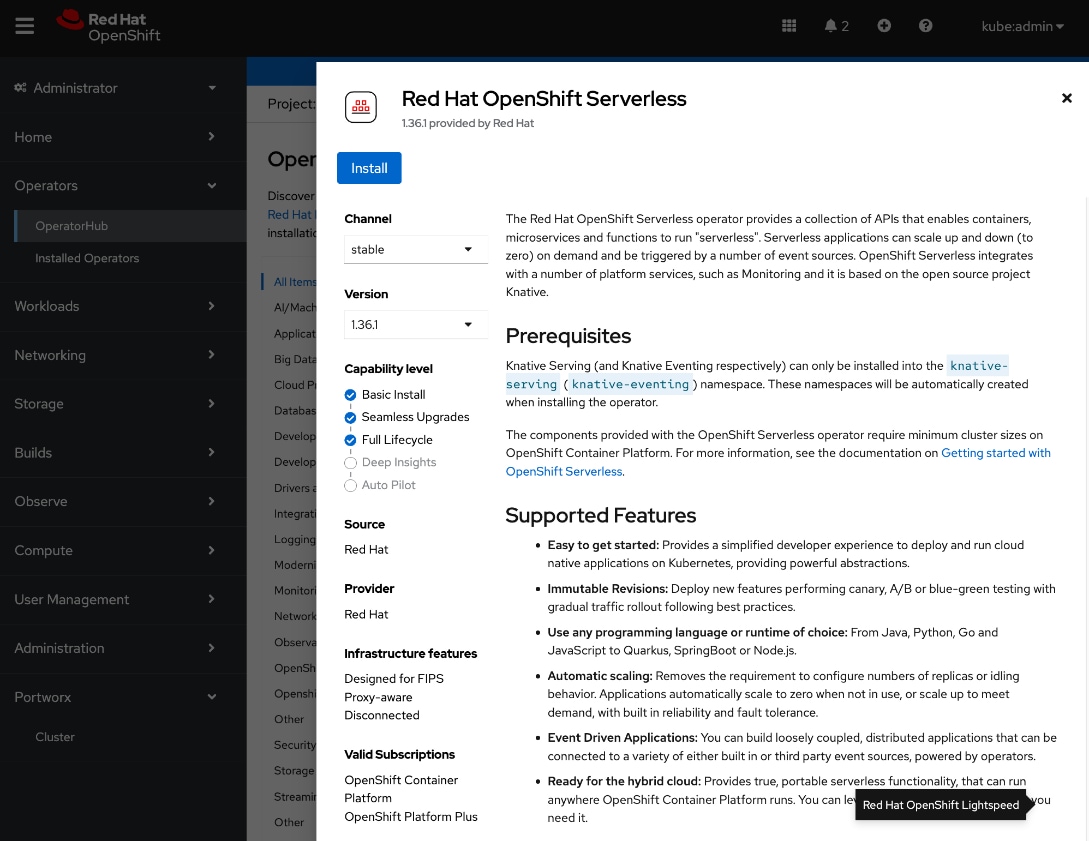

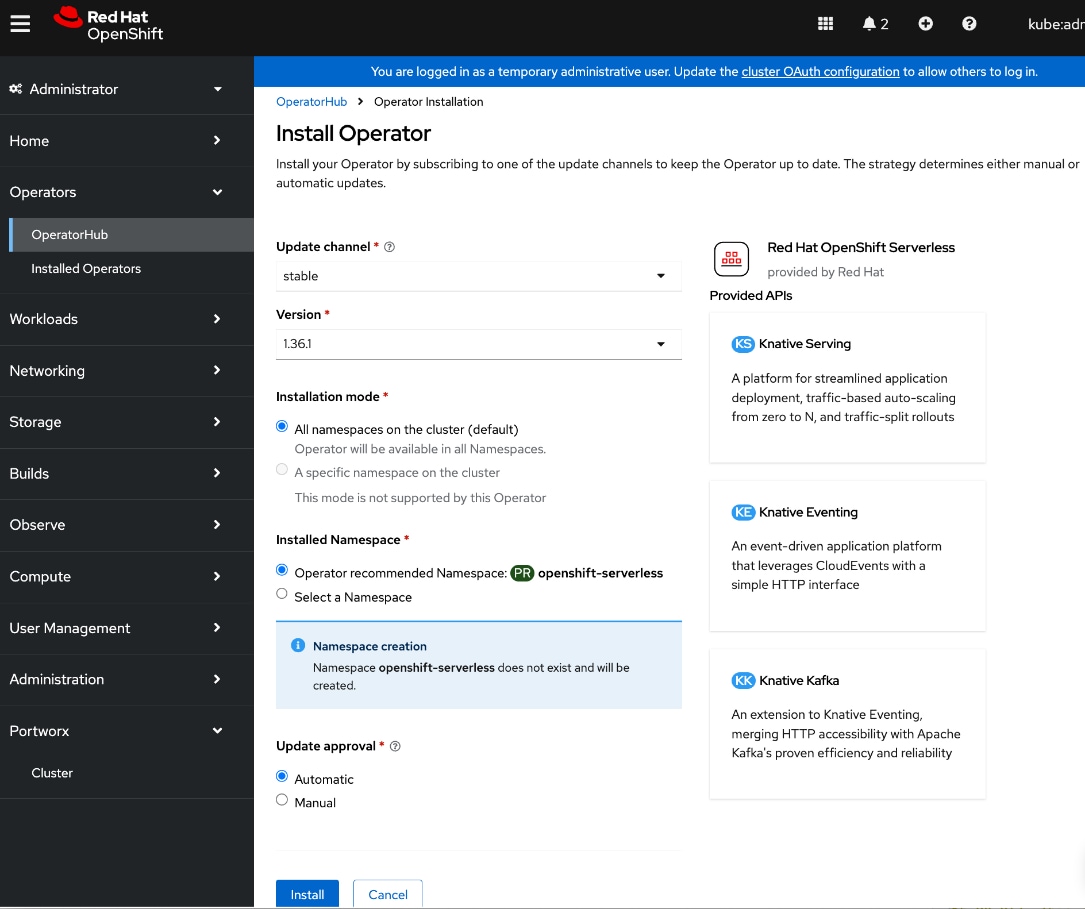

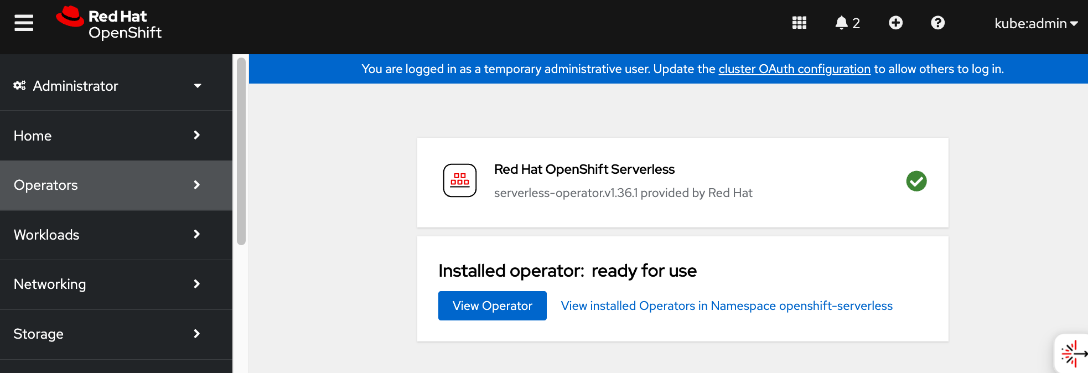

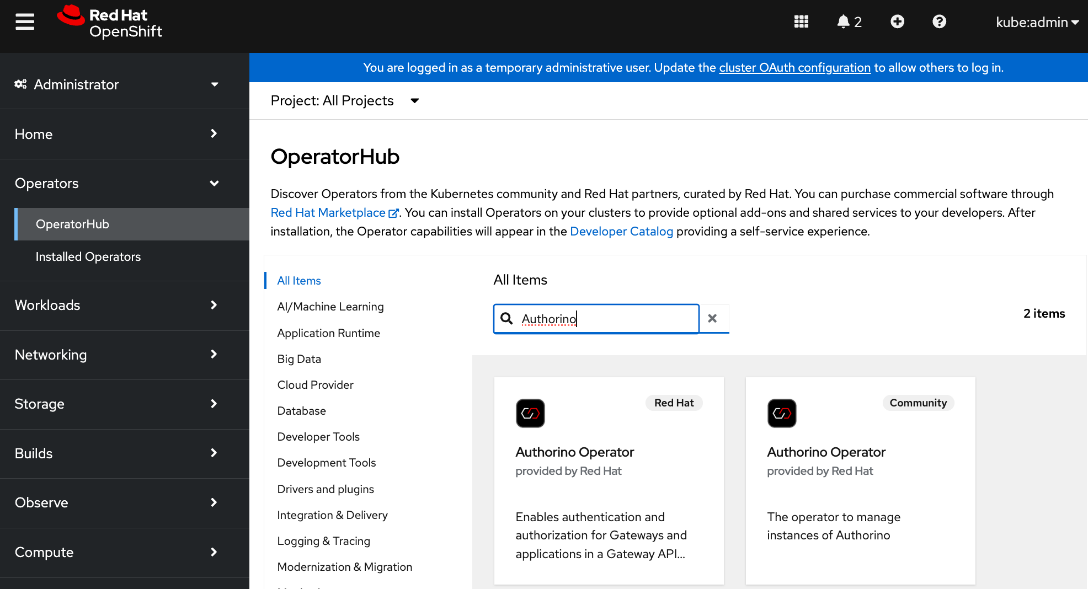

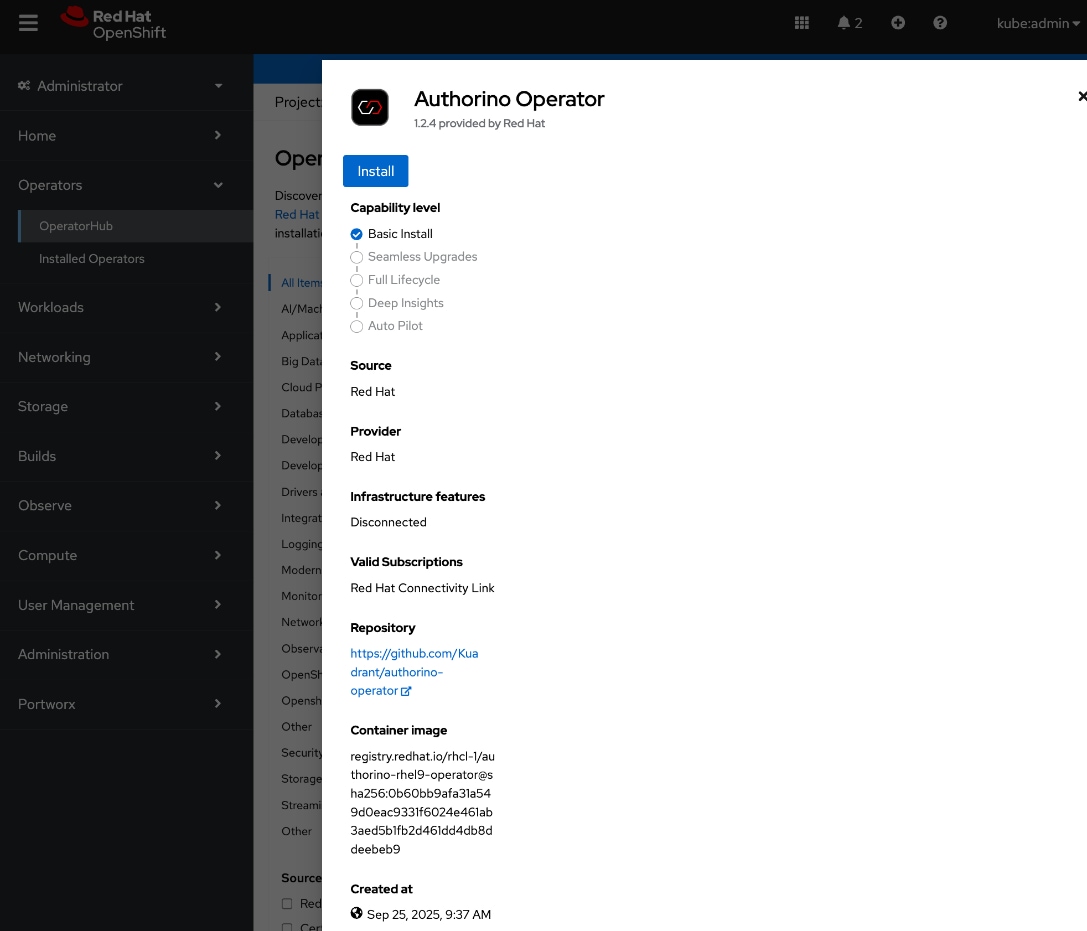

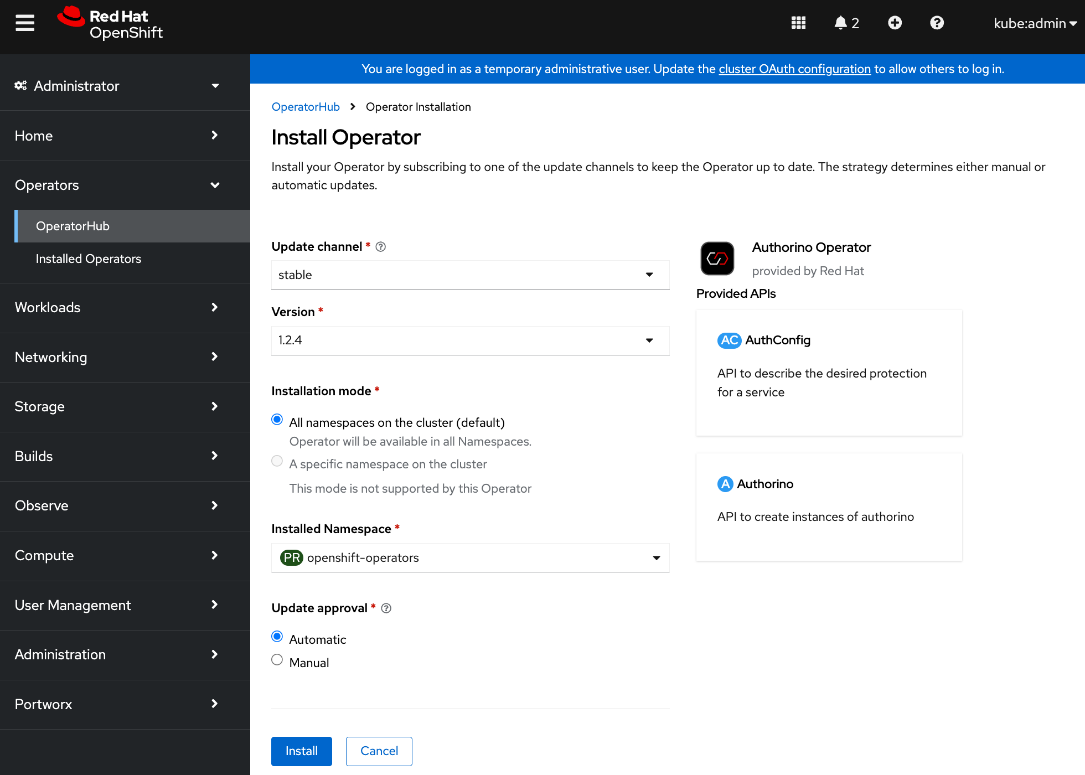

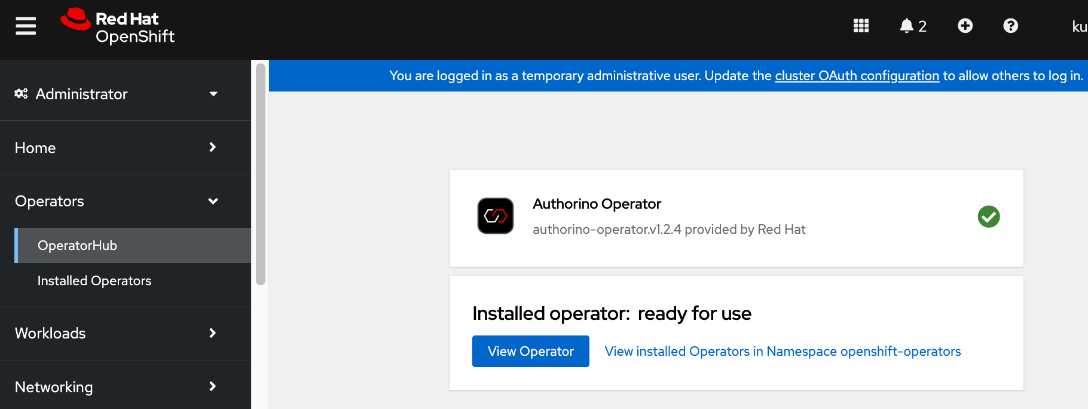

● Deploy prerequisite operators for OpenShift AI - Service Mesh Operator, Red Hat OpenShift Serverless, Red Hat Authorino

● Deploy Red Hat OpenShift AI Operator. The environment is now ready for accelerating and operationalizing enterprise AI/ML efforts at scale.

● Modify Storage Classes for persistent storage – enable ReadWriteMany for training and fine-tuning workloads

|

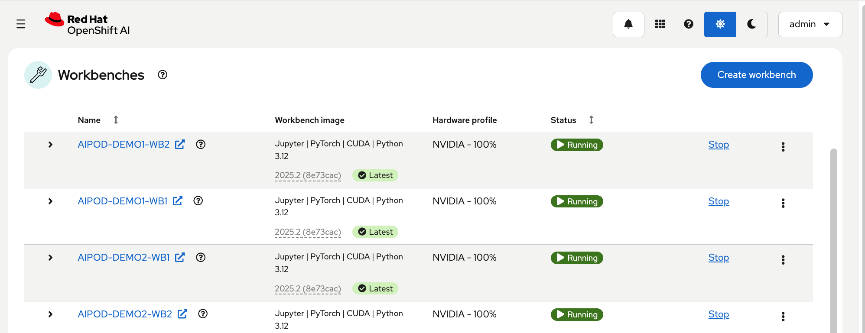

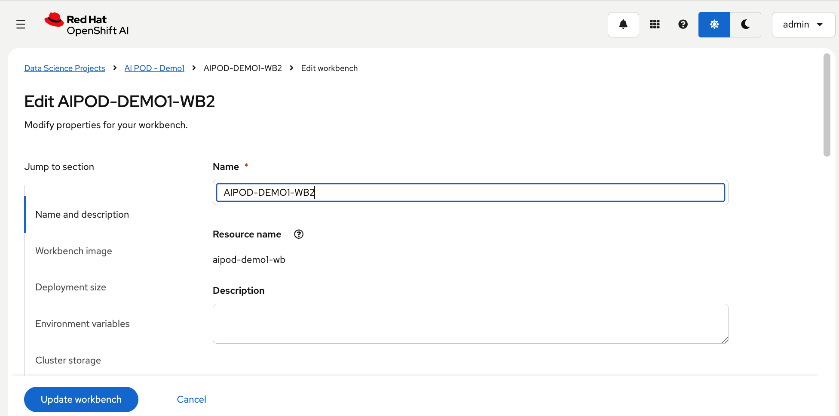

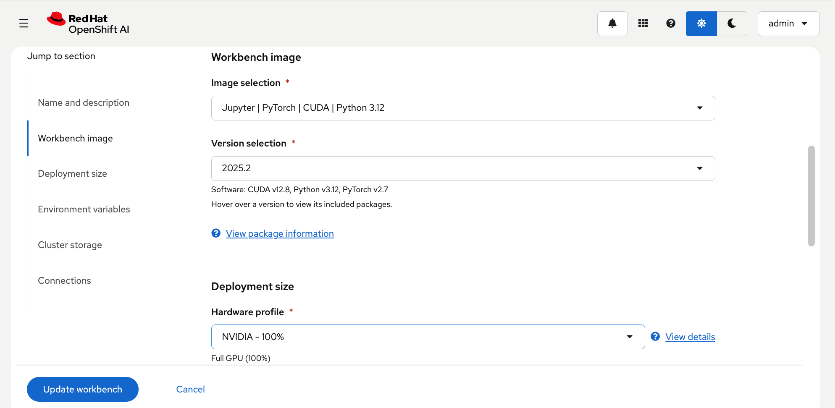

| CVD_19 |

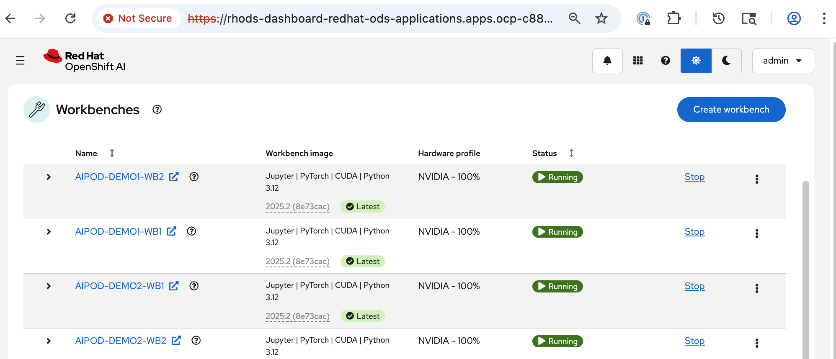

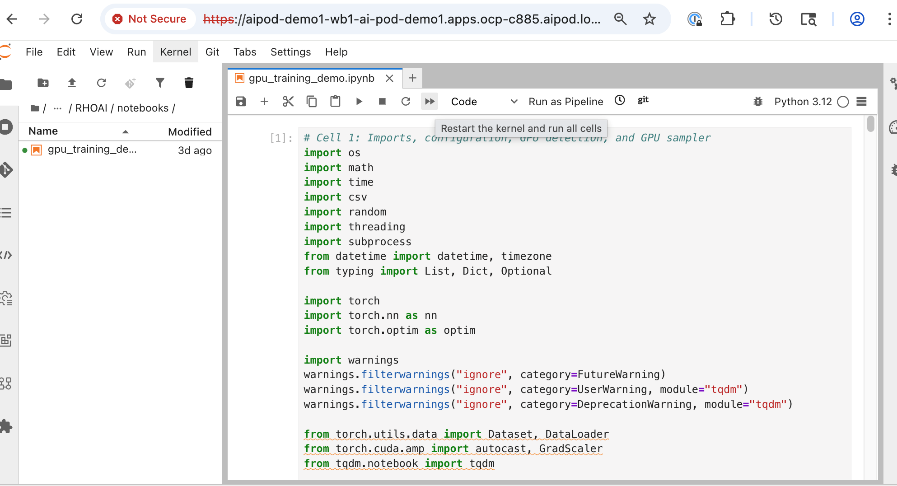

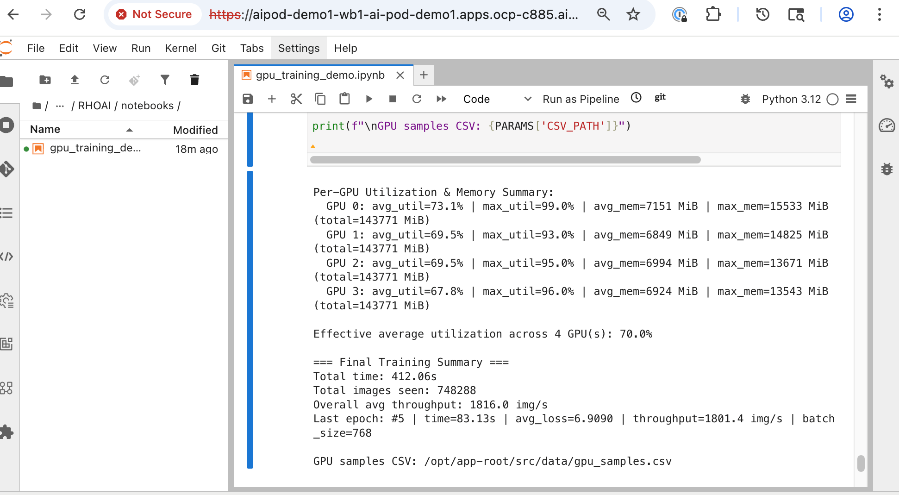

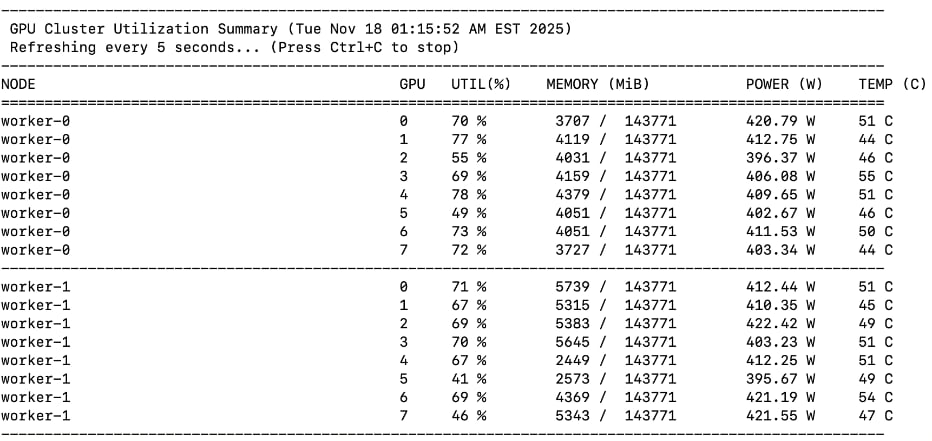

Validate solution using fine-tuning workload in OpenShift AI

● Setup workbench for the workload in OpenShift AI

● Setup GitHub access from workbench and deploy workload

● Monitor GPU utilization

|

| CVD_20 |

Validation summary

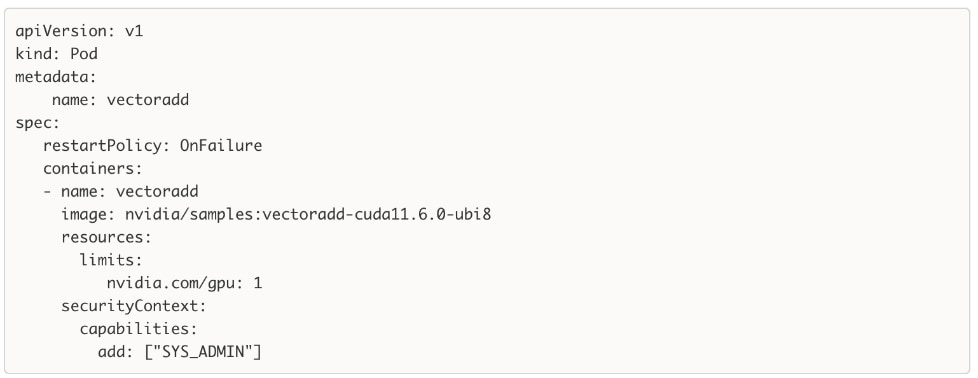

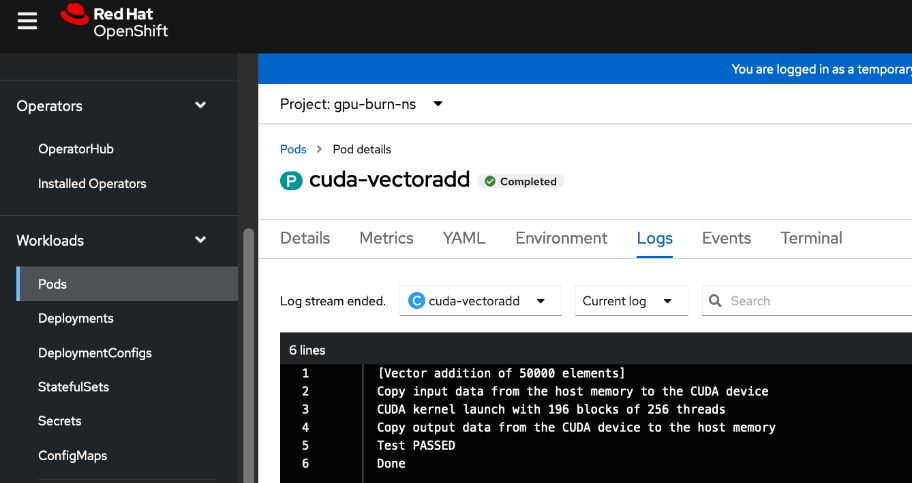

● GPU Functional Validation – Sample CUDA Application

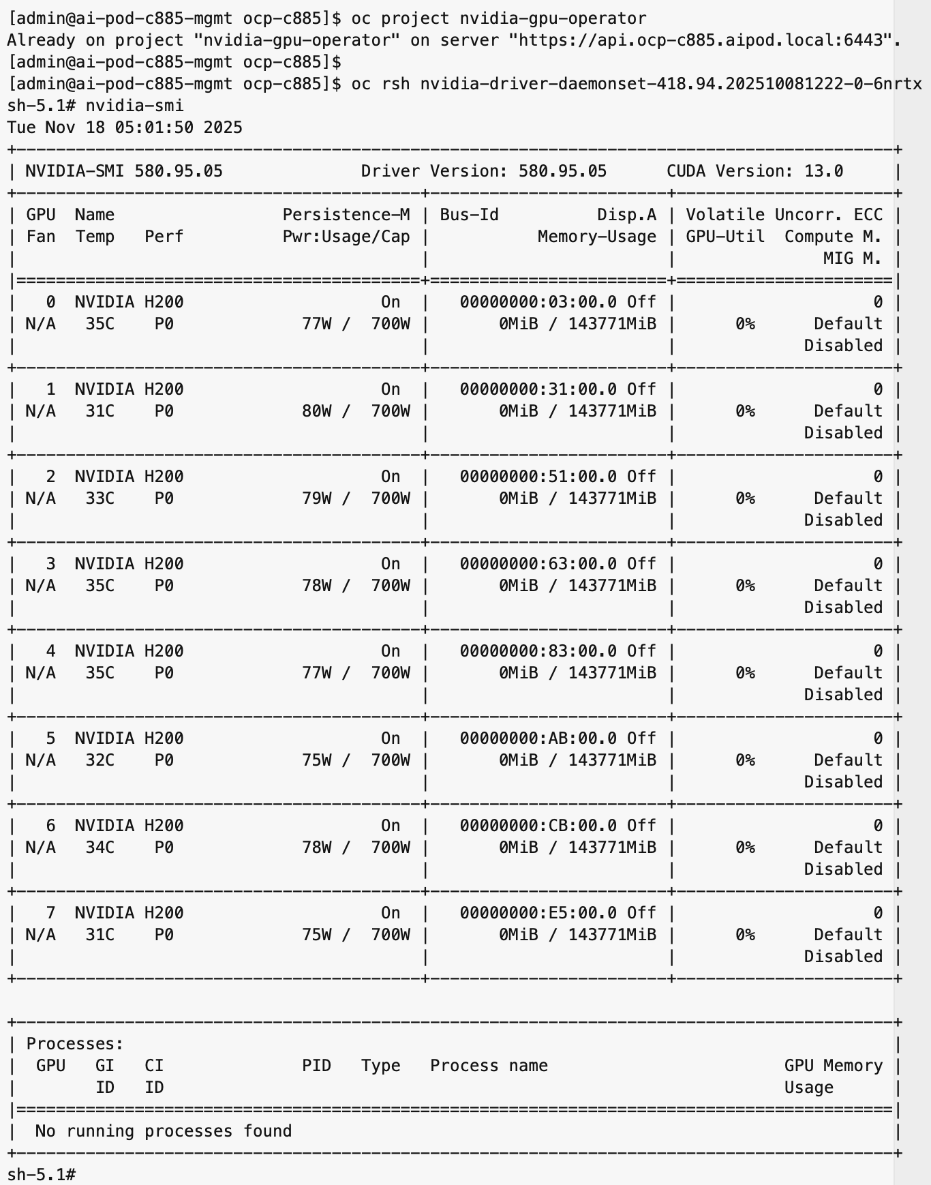

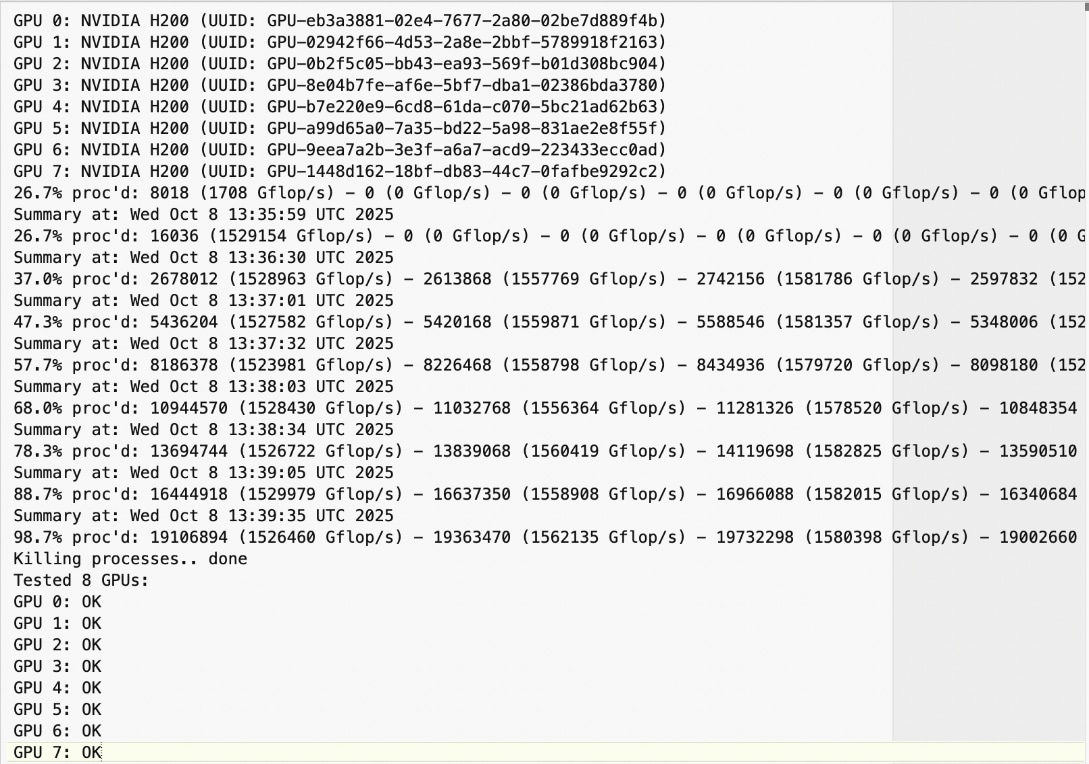

● GPU Burn Test:

https://github.com/wilicc/gpu-burn

● IB_WRITE Tests

● NCCL Tests

● MLPerf

|

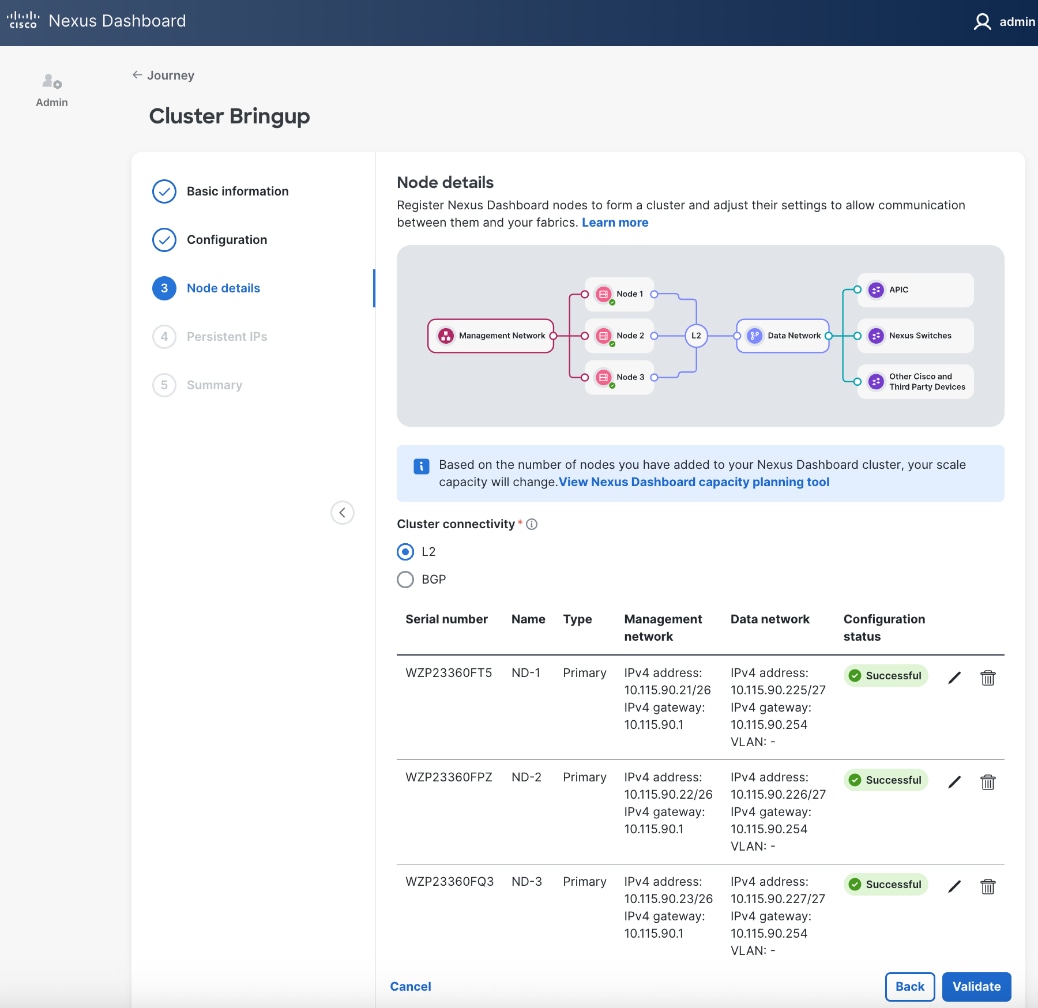

The procedures detailed in this section explain how to deploy a 3-node Nexus Dashboard (ND) cluster. The Cisco Nexus Dashboard provided templates are used to deploy both the backend and frontend fabrics in the AI POD solution.

The procedures in this section will:

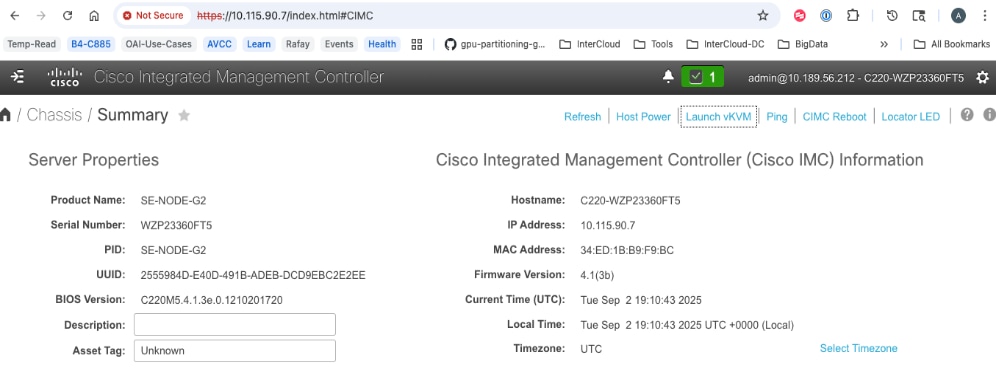

● Setup the first node in a 3-node Nexus Dashboard Cluster using CIMC management.

● Use the web UI and cluster bring up workflow to complete the setup of the first node, deploy remaining nodes in the cluster and bring up the 3-node ND cluster.

Assumptions and Prerequisites

● Out-of-band/CIMC access to the first Nexus Dashboard node in the cluster.

● Access to Nexus Dashboard ISO image on a local or remote (NFS/HTTP) server.

● DNS and NTP services are available for use by Nexus Dashboard cluster

Setup Information

Table 7. Setup Parameters for Nexus Dashboard

| Parameter Type |

Parameter Name | Value |

Additional Information |

| Nexus Dashboard Management |

ND-MGMT |

|

| CIMC IP for first ND (ND-1) |

10.115.90.7/26 |

|

| ND-MGMT IP for first ND |

10.115.90.21/26 |

|

| Gateway IP |

10.115.90.1/26 |

|

| Cluster Bringup Workflow |

||

| Basic Information |

|

|

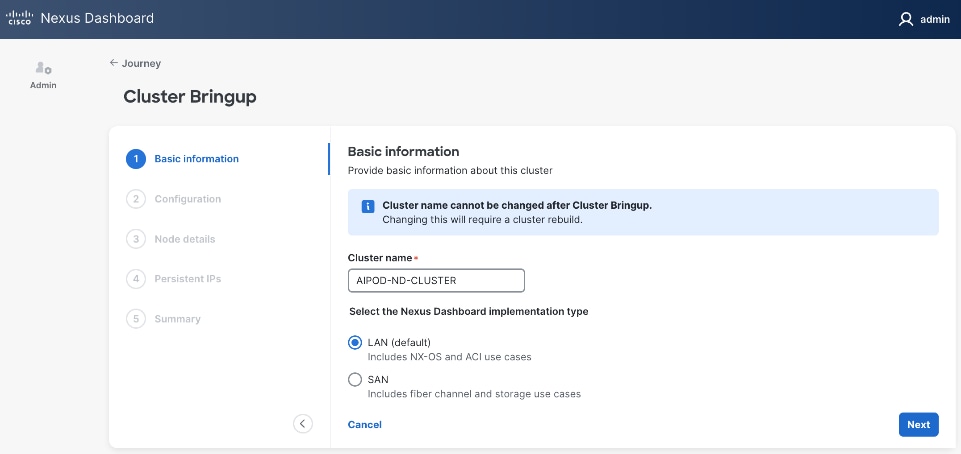

| Cluster Name |

AIPOD-ND-CLUSTER |

|

| Implementation Type |

LAN (default) |

|

| Configuration |

|

|

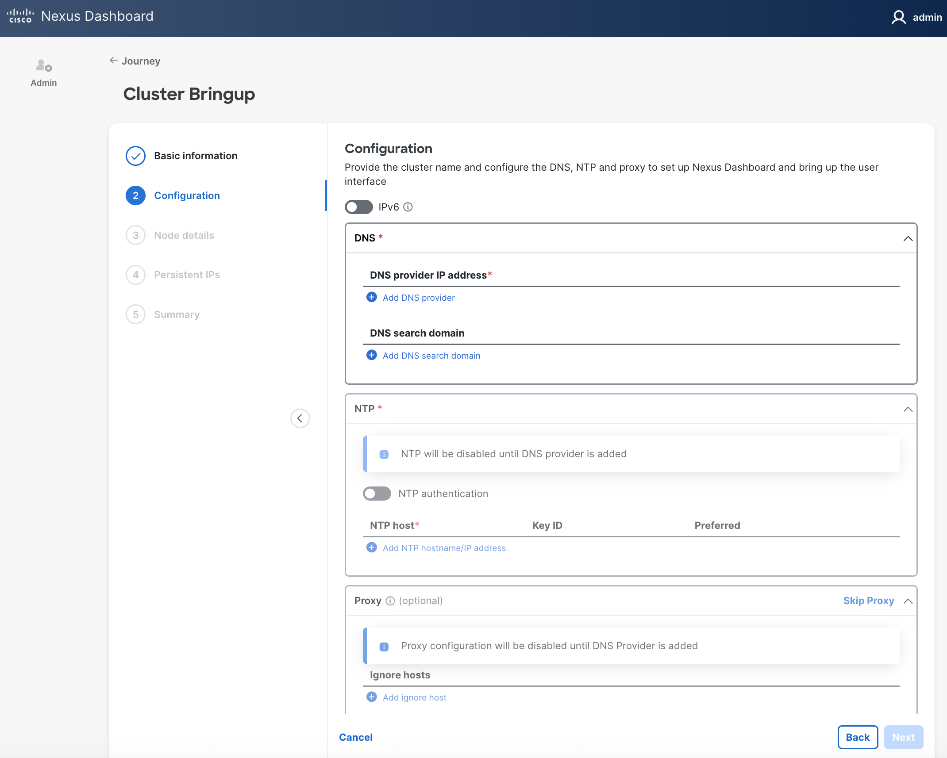

| DNS Server |

64.102.6.247 |

|

| DNS Search Domain |

cisco.com |

|

| NTP |

1.ntp.esl.cisco.com 2.ntp.esl.cisco.com 3.ntp.esl.cisco.com |

Add at least two NTP sources for redundancy |

| Proxy |

Skip Proxy |

|

| Advanced Settings |

<Using defaults> |

|

| Node Details: ND-1 |

|

|

| General |

|

|

| Hostname |

ND-1 |

First ND Node |

| Type |

Primary |

|

| Management Network |

|

|

| IP Address |

<Same as ND-MGMT > |

Provided above |

| Gateway |

<Same as ND-MGMT> |

Provided above |

| Data Network |

|

|

| ND-DATA: IP for ND-1 |

10.115.90.225/27 |

|

| ND-DATA: Gateway |

10.115.90.254 |

|

| Node Details: ND-2 |

|

|

| CIMC IP |

10.115.90.8 |

Second ND Node |

| Username |

admin |

|

| Password |

<specify> |

|

| General |

|

|

| Hostname |

ND-2 |

|

| Type |

Primary |

|

| Management Network |

|

|

| IP Address |

10.115.90.22/26 |

|

| Gateway |

10.115.90.1 |

|

| Data Network |

|

|

| ND-DATA: IP for ND-2 |

10.115.90.226/27 |

|

| ND-DATA: Gateway |

10.115.90.254 |

|

| Node Details: ND-3 |

|

|

| CIMC IP |

10.115.90.9 |

Third ND Node |

| Username |

admin |

|

| Password |

<specify> |

|

| General |

|

|

| Hostname |

ND-3 |

|

| Type |

Primary |

|

| Management Network |

|

|

| IP Address |

10.115.90.23/26 |

|

| Gateway |

10.115.90.1 |

|

| Data Network |

|

|

| ND-DATA: IP for ND-3 |

10.115.90.227/27 |

|

| ND-DATA: Gateway |

10.115.90.254 |

|

|

|

|

|

| Persistent IPs |

10.115.90.228-.238 |

|

Deployment Steps

To deploy a 3-node Nexus Dashboard cluster, complete the procedures below using the setup information provided in this section.

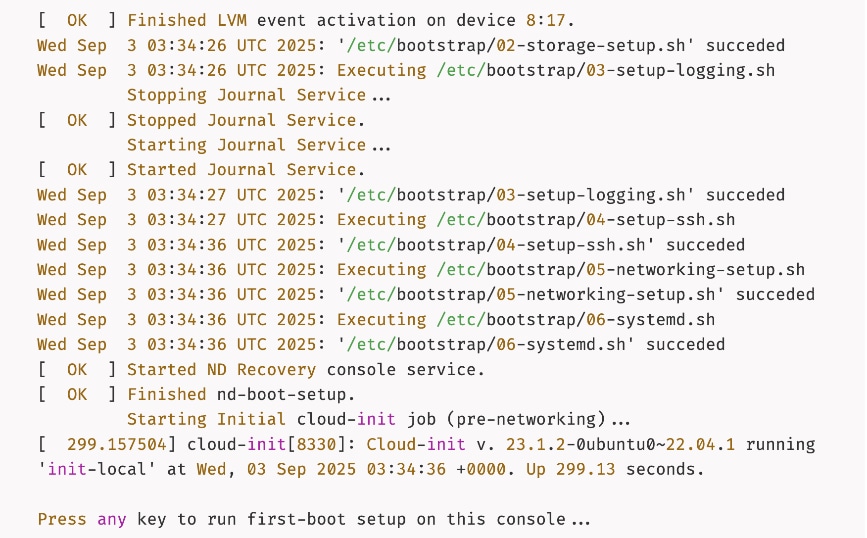

Bring up first node in a 3-node Nexus Dashboard cluster

Procedure 1. Deploy first Nexus Dashboard cluster node

Step 1. SSH into the first node:

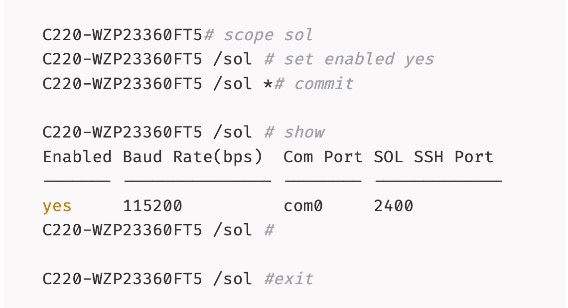

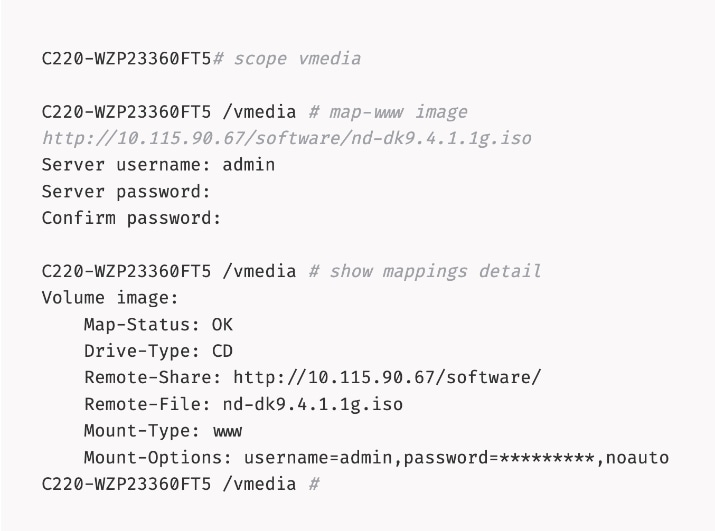

Step 2. Mount the Nexus Dashboard ISO from a HTTP server using remote vMedia:

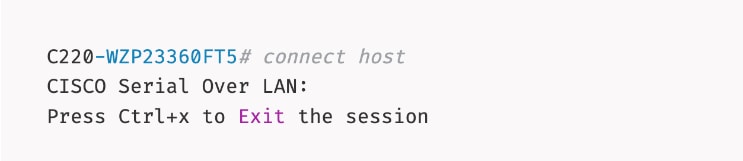

Step 3. Connect to Host over Serial Over LAN or go to next step and using vKVM from a browser.

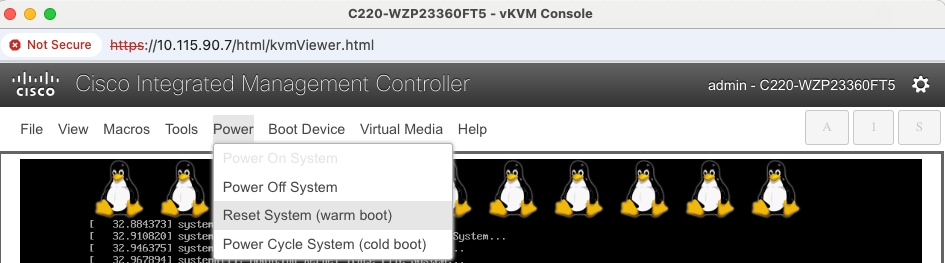

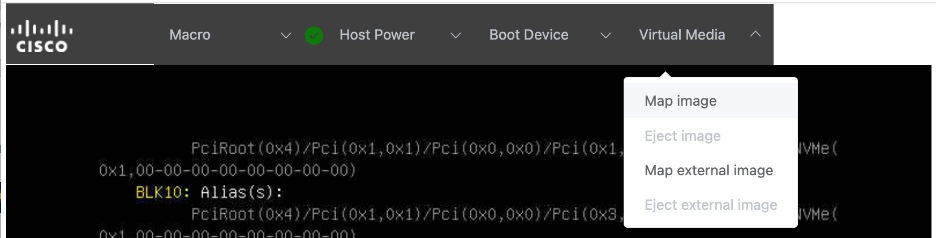

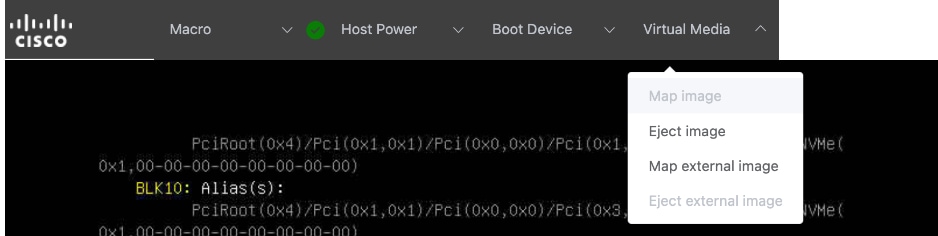

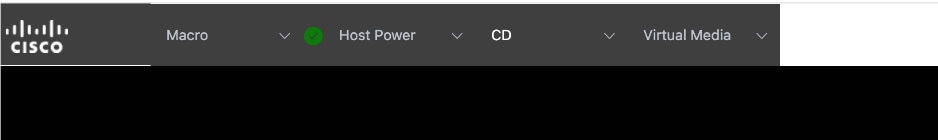

Step 4. From a browser, go back to CIMC of the node and open the vKVM console.

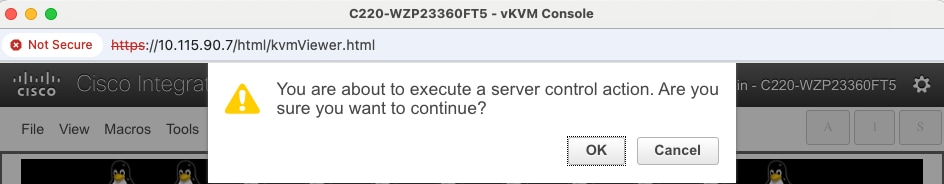

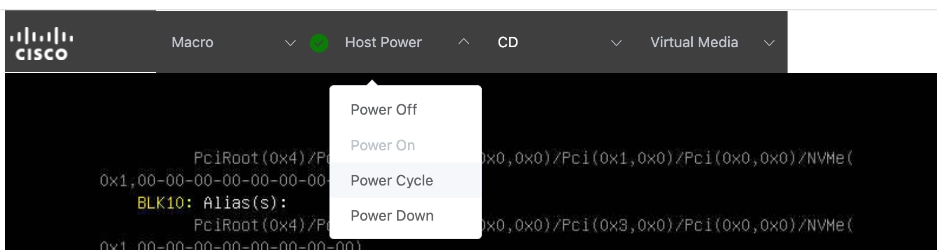

Step 5. Click Launch vKVM from the top menu. In the newly opened vKVM viewer window, click Reset System.

Step 6. Click OK in the next pop-up window.

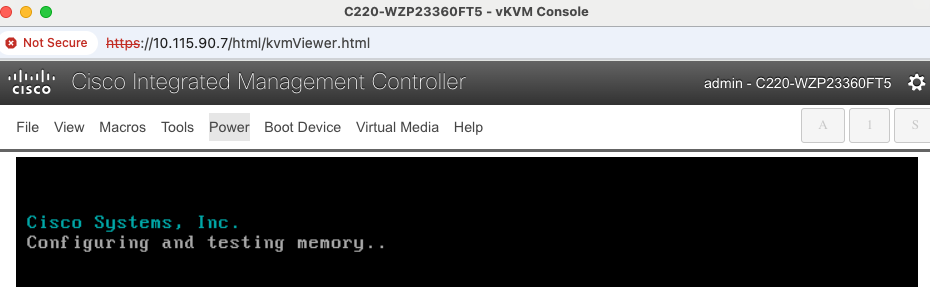

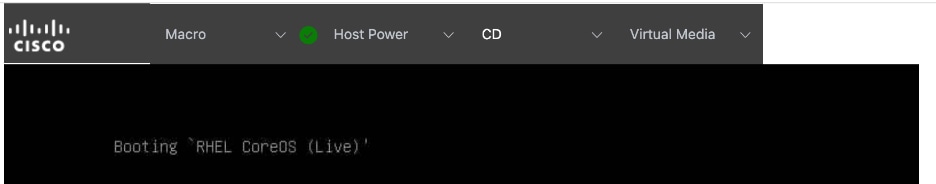

Step 7. Monitor the booting process.

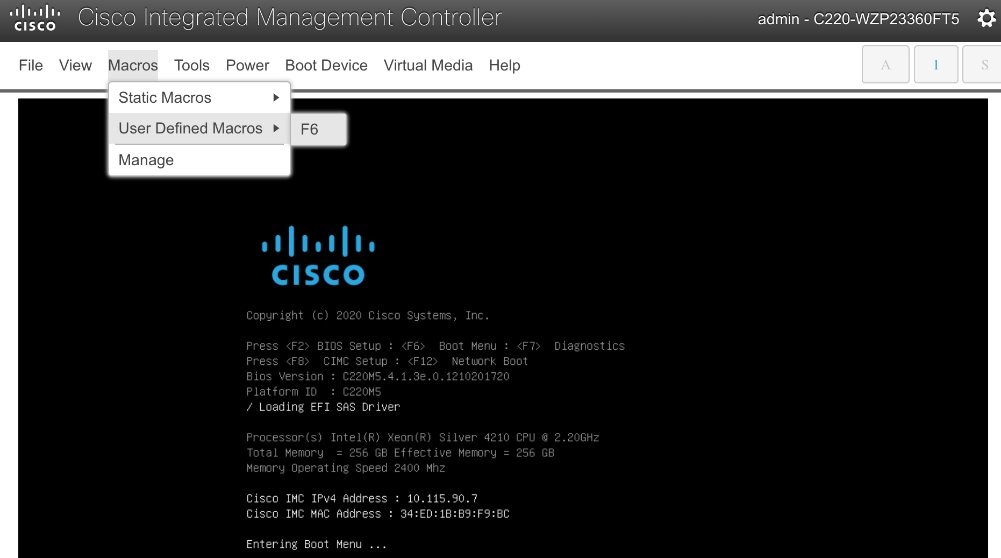

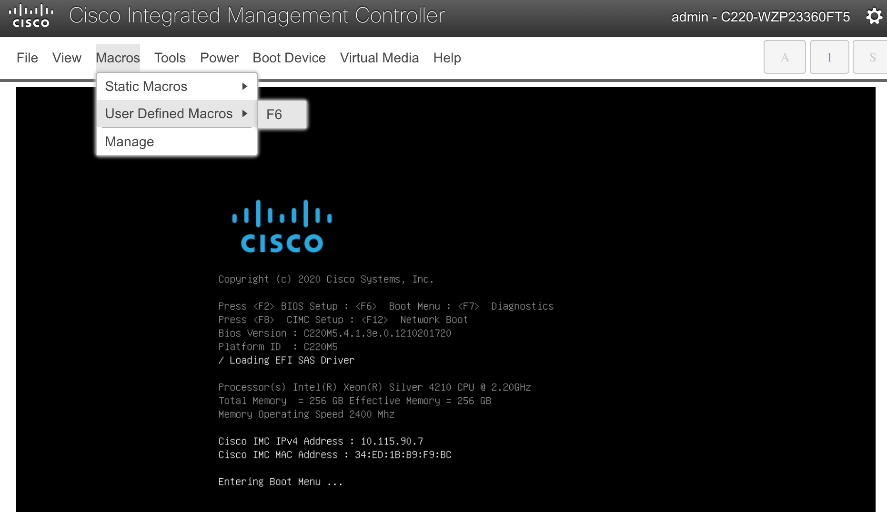

Step 8. Go to Macros to define the User Defined Macros for F6.

Step 9. Click F6 to bring up the boot menu when you see Entering Boot Menu in vKVM.

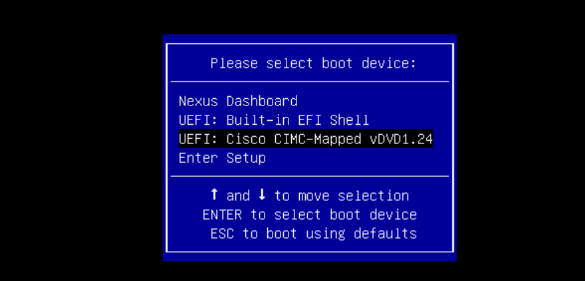

Step 10. Use Up/Down arrow keys to select UEFI: Cisco CIMC-Mapped vDVD as the boot device from the boot menu.

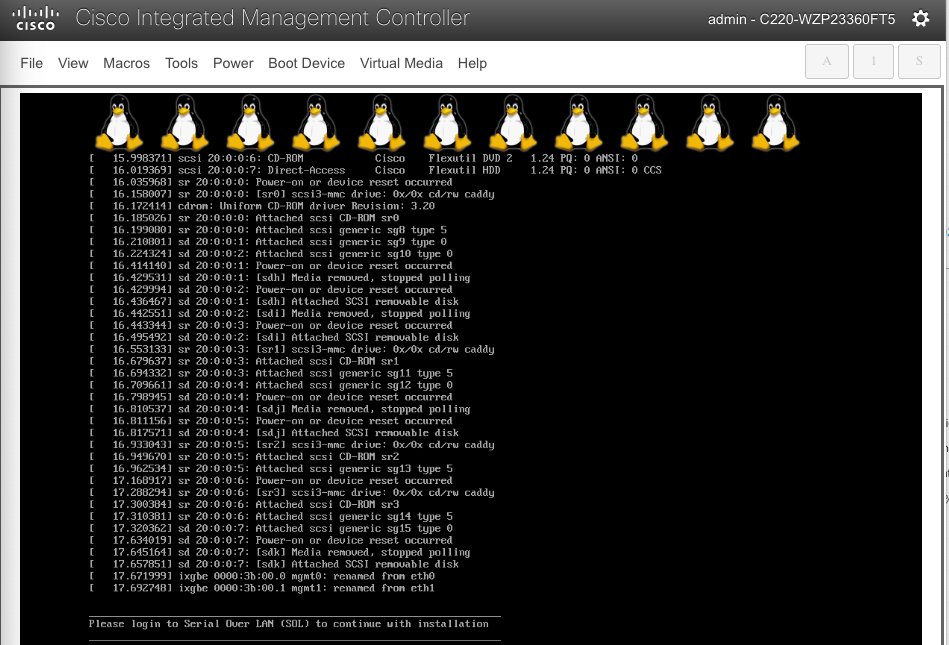

Step 11. Wait until you see the message to login to Serial Over LAN (SOL) console.

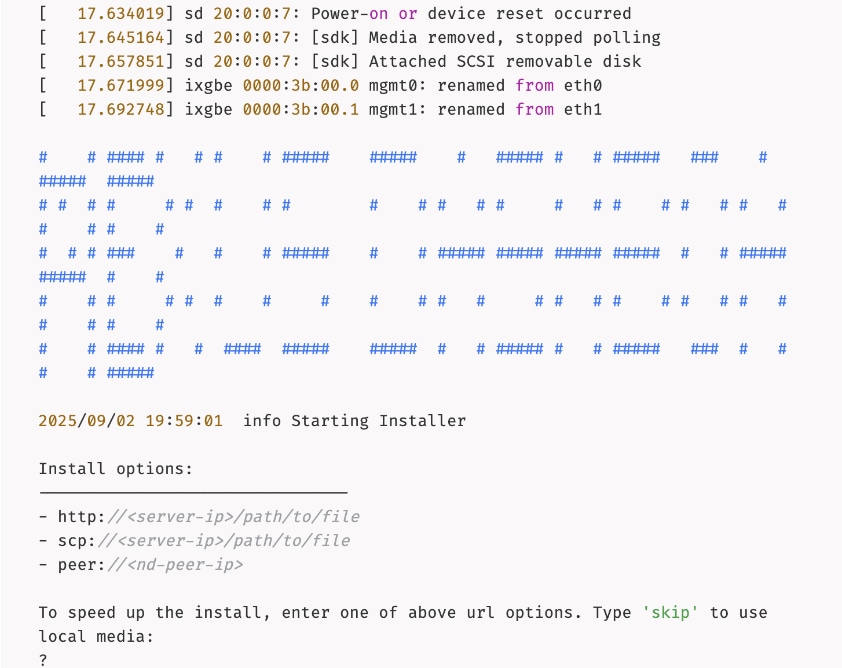

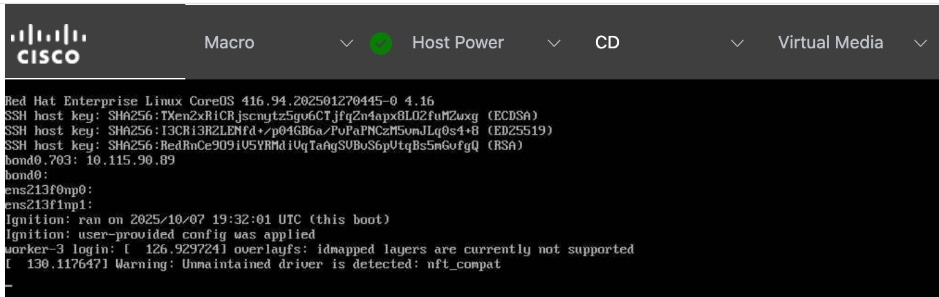

Step 12. Switchback to the SSH terminal session and you should see the following messages on the host console:

Step 13. Type skip to use the previously CIMC-mounted vMedia image.

Step 14. Once the installation completes successfully, the node will be powered off. Unmount the vMedia and power on the host.

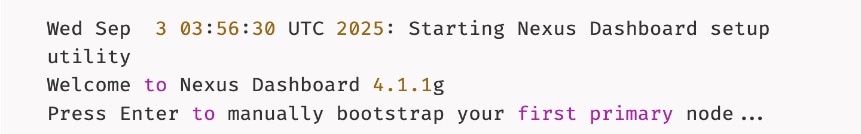

Step 15. Press any key to run first-boot setup.

Step 16. Press Enter to manually bootstrap your first primary node...

Step 17. Specify admin password, IP Address/Mask for Management Network, and Gateway.

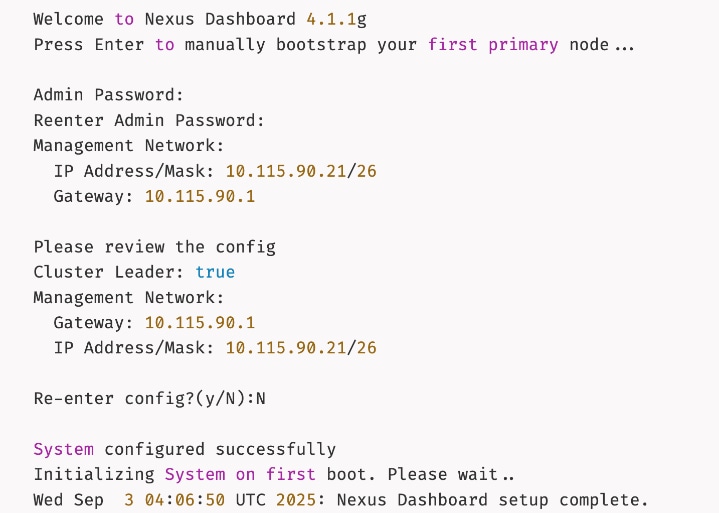

Step 18. Wait for the setup to complete and you see the following message on the console.

Complete Deployment and Setup of Nexus Dashboard Cluster using Workflow

Procedure 1. Install and setup remaining nodes and bring up Nexus Dashboard cluster

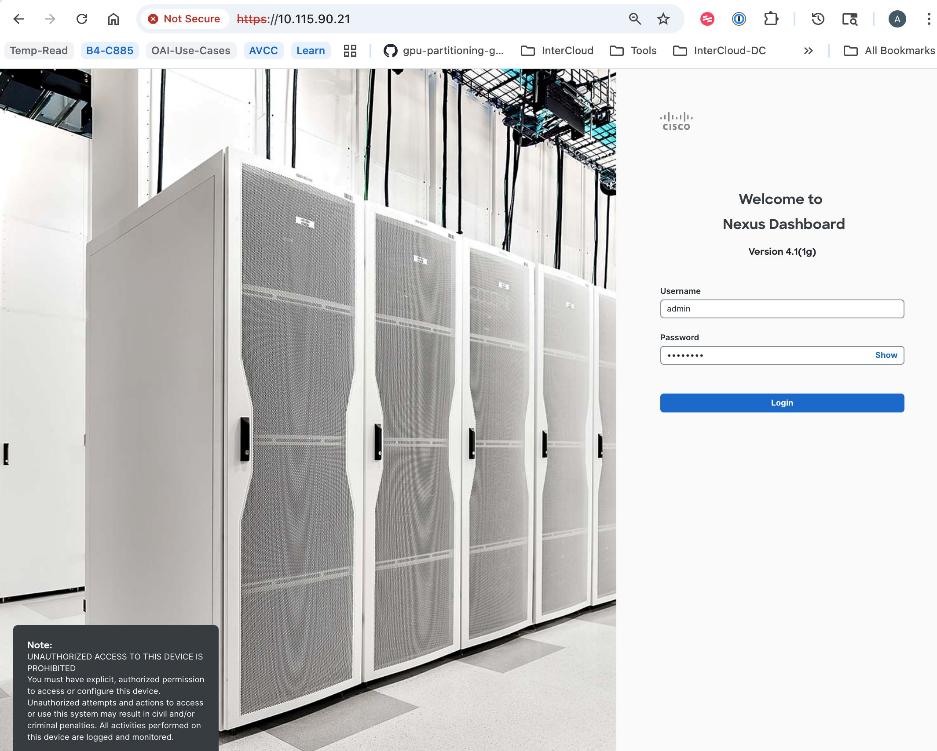

Step 1. From a browser, go to the management IP of the Nexus Dashboard. Log in using admin account.

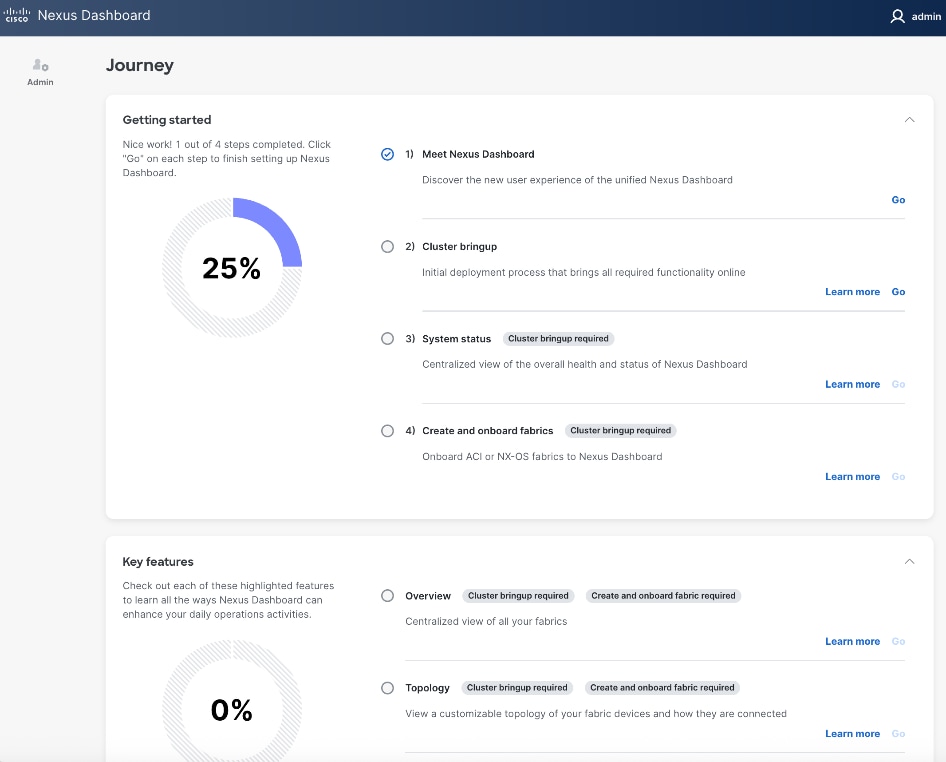

Step 2. Cancel the pop-up windows titled Meet Nexus Dashboard. You should be redirected to the initial setup wizard or Journey screen.

Step 3. From Cluster bringup, click Go.

Step 4. In the Cluster Bringup workflow, for Basic Information, specify a Cluster Name and select radio button for LAN (Default). Click Next.

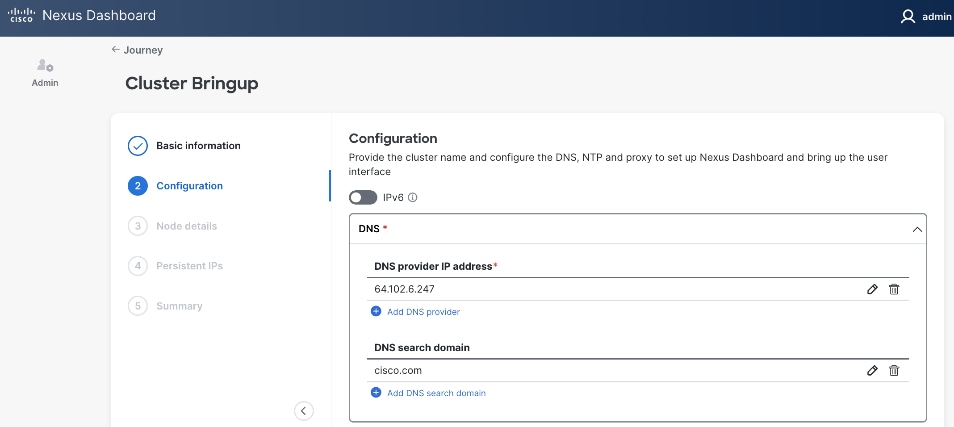

Step 5. In the Cluster Bringup workflow, for Configuration, for DNS, click Add DNS provider and specify a DNS Server IP.

Step 6. (Optional) Repeat this step to add multiple DNS servers.

Step 7. (Optional) Add DNS search domain.

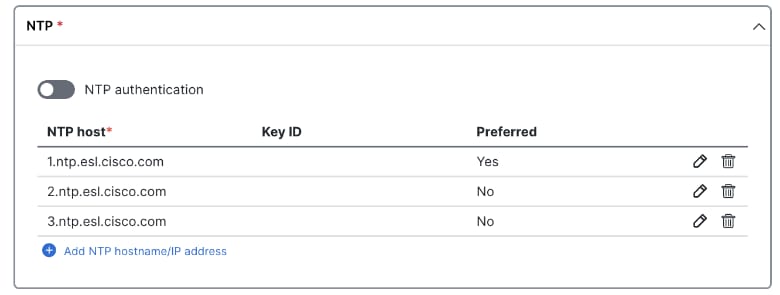

Step 8. For NTP, click Add NTP hostname/IP address and specify NTP server IP or hostname.

Step 9. (Optional) Repeat this step to add multiple NTP servers. Minimum of two NTP servers are recommended. Click the checkbox to select one as Preferred.

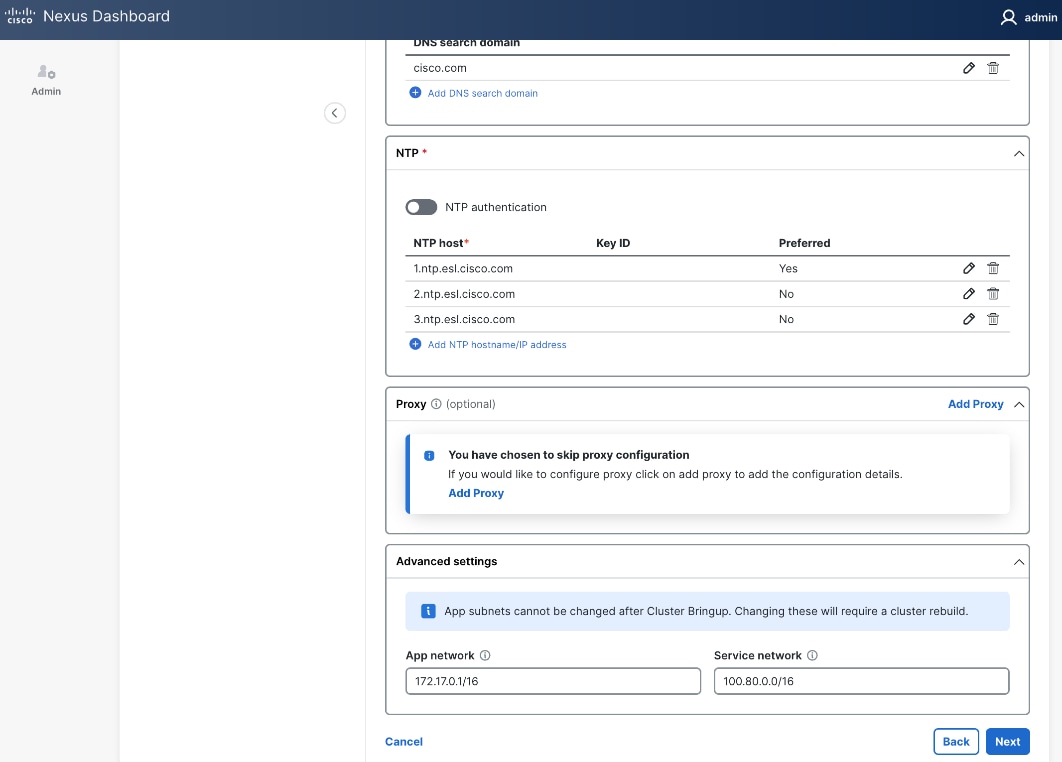

Step 10. (Optional) For Proxy, provide Proxy server information. Otherwise, click Skip Proxy in the section and click Confirm in the pop-up warning message.

Step 11. (Optional) For Advanced Settings, expand Advanced settings to specify App/Service subnets from the default settings (not recommended).

Step 12. Click Next.

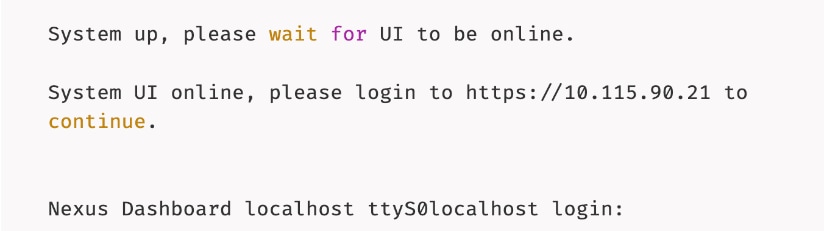

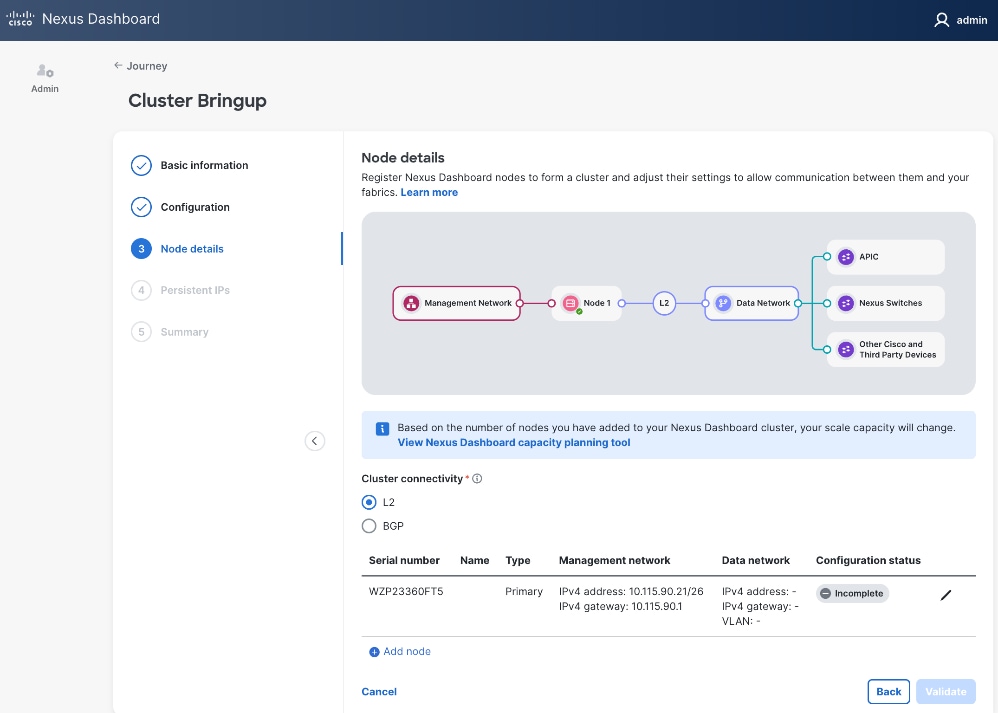

Step 13. In the Cluster Bringup workflow, for Node Details, click Incomplete to the right of the serial number for the first primary ND node to complete the setup.

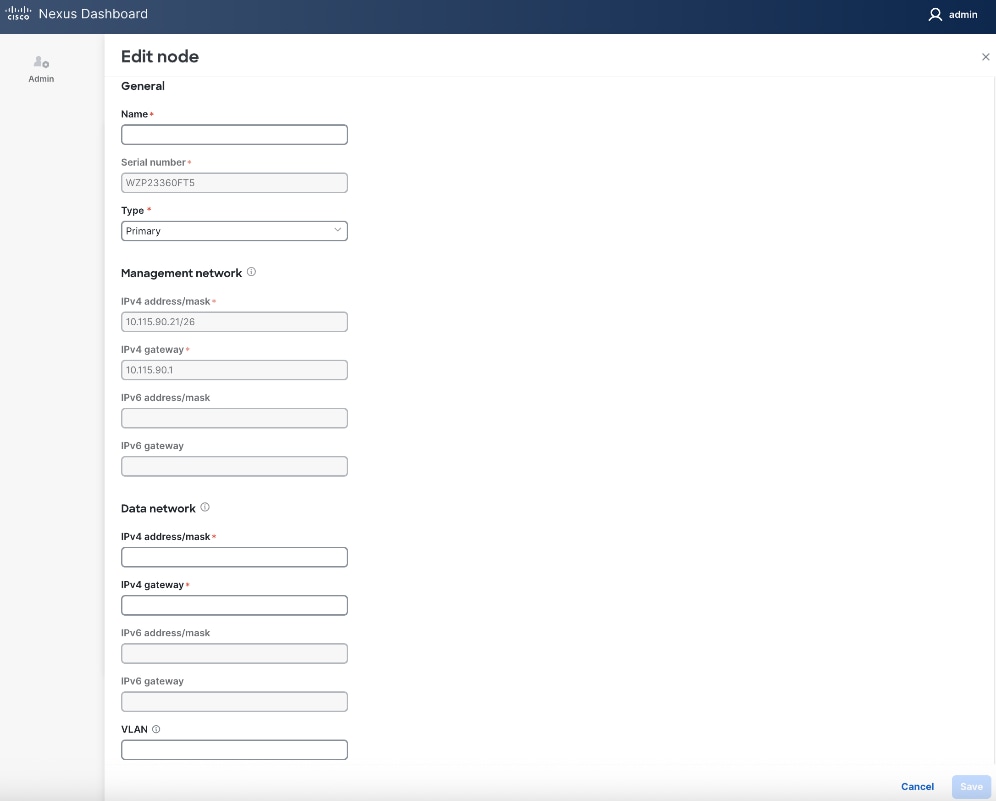

Step 14. In the Edit node window, specify hostname for first ND. Leave Type as Primary. Everything else should already be filled in under General and Management network sections below it.

![]()

Step 15. Scroll down to Data network section. Specify IP address/mask and gateway info for the data network.

Step 16. (Optional) specify a VLAN ID if you're not using a native VLAN on the link towards the fabric. If a VLAN is specified, the fabric facing links/ports on the ND node and switch it connects to will need to be configured for trunking.

Step 17. Click Save.

Step 18. Click Add Node to add a second Primary node to the cluster.

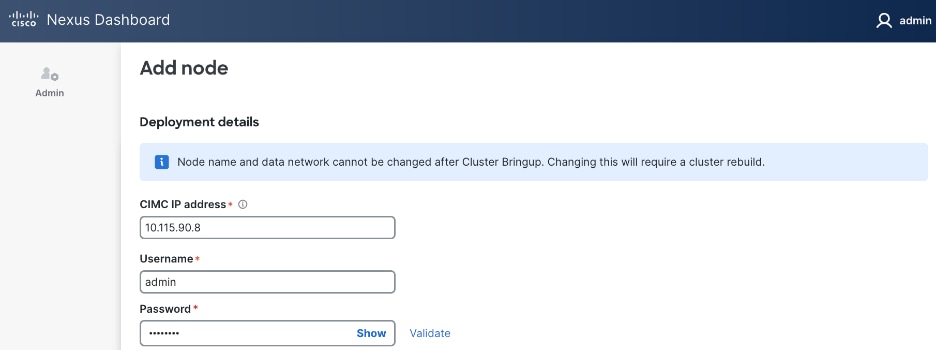

Step 19. In the Add node window, specify CIMC (IP address, Access info). Click Validate.

Step 20. If the CIMC verification succeeds, after a couple of minutes, remaining fields in the Add node window will become available.

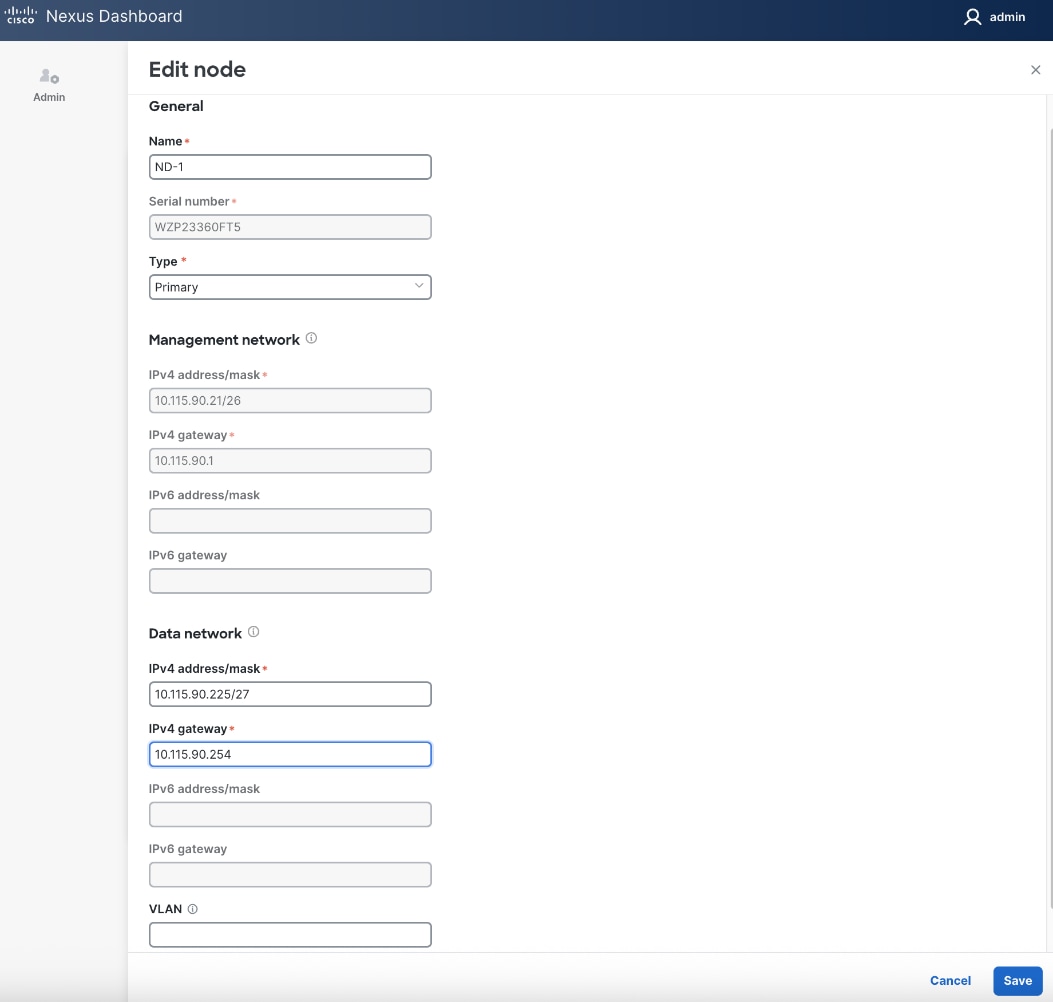

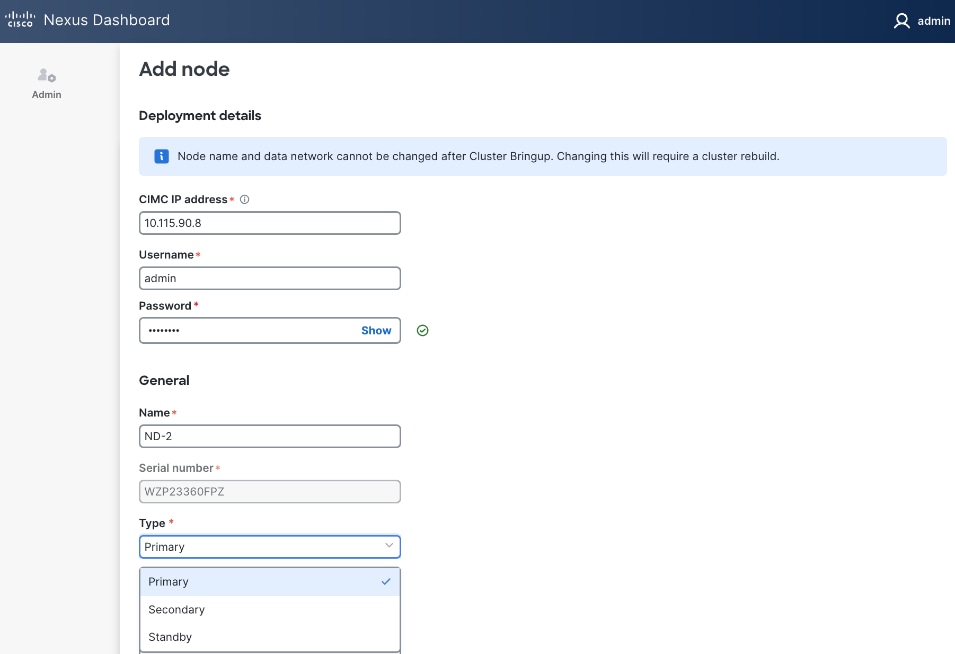

Step 21. For Name, specify hostname for second ND node. For Type, select Primary from the drop-down list.

![]()

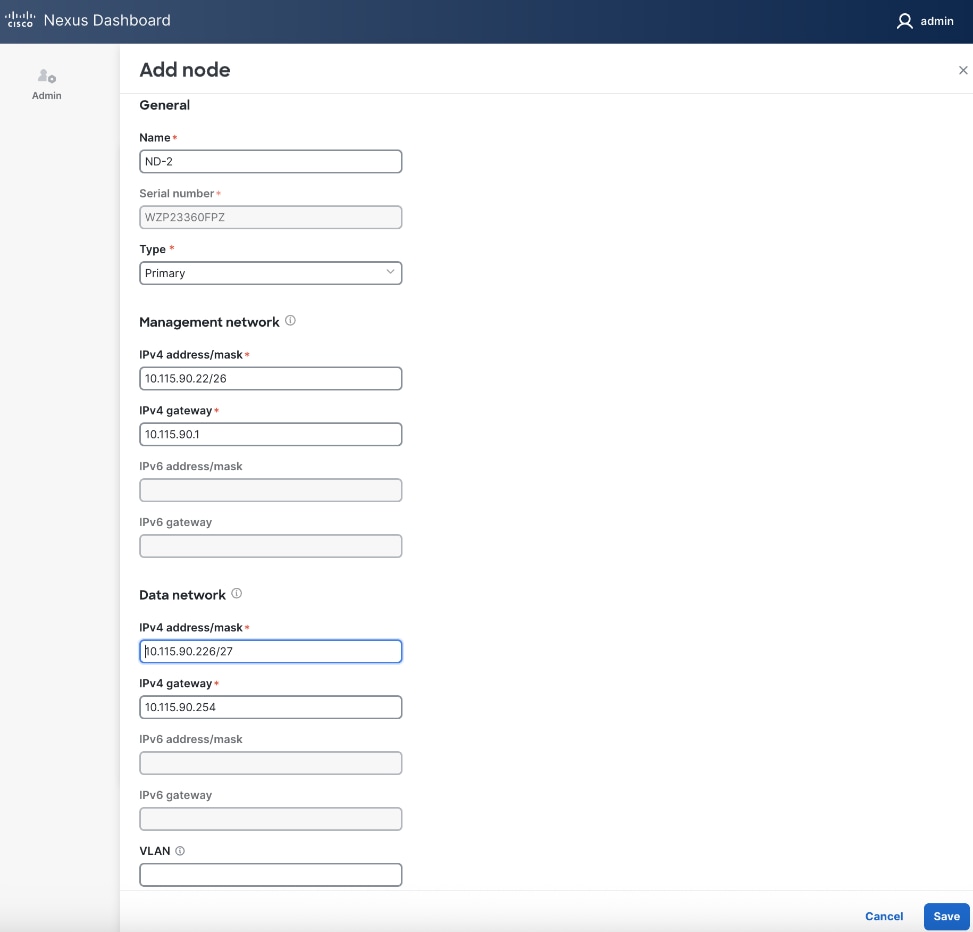

Step 22. For the Management network and Data network, specify IP address/mask, gateway info for the second ND node.

Step 23. Click Save.

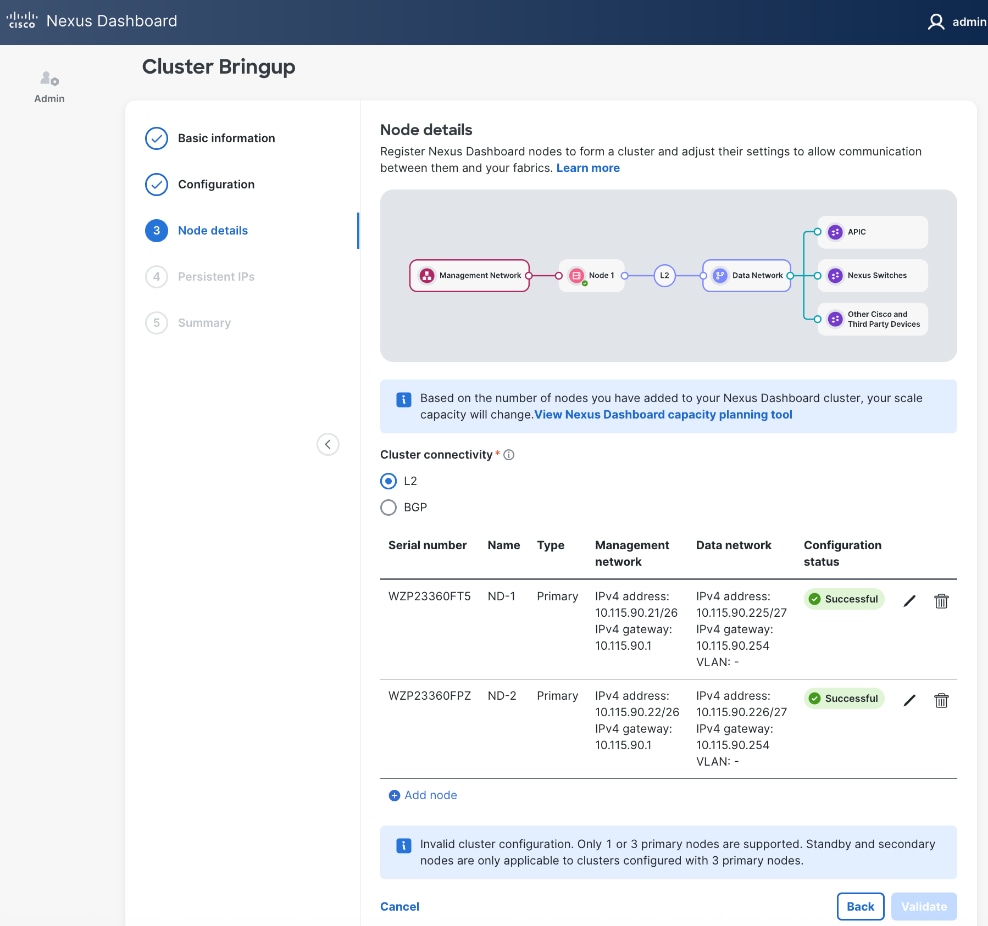

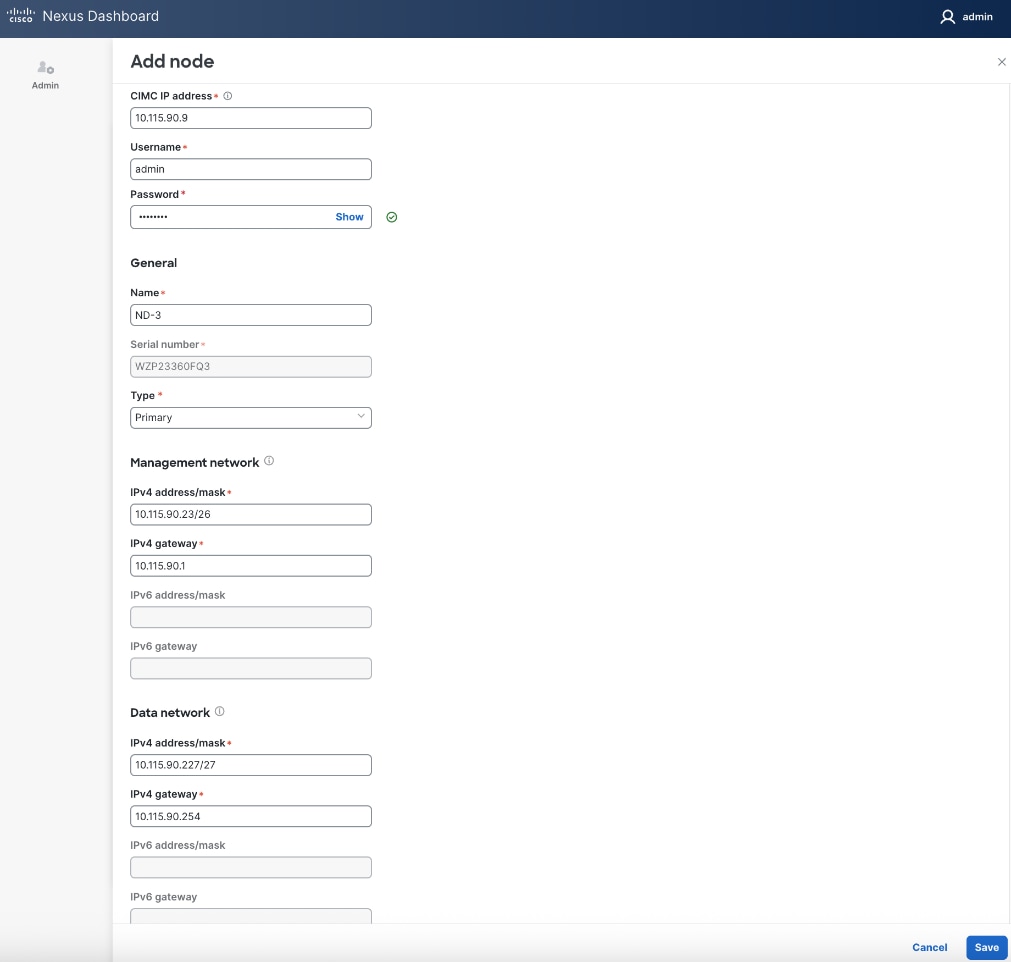

Step 24. Repeat steps 1 – 23 for second ND Node to add a third primary node to ND cluster.

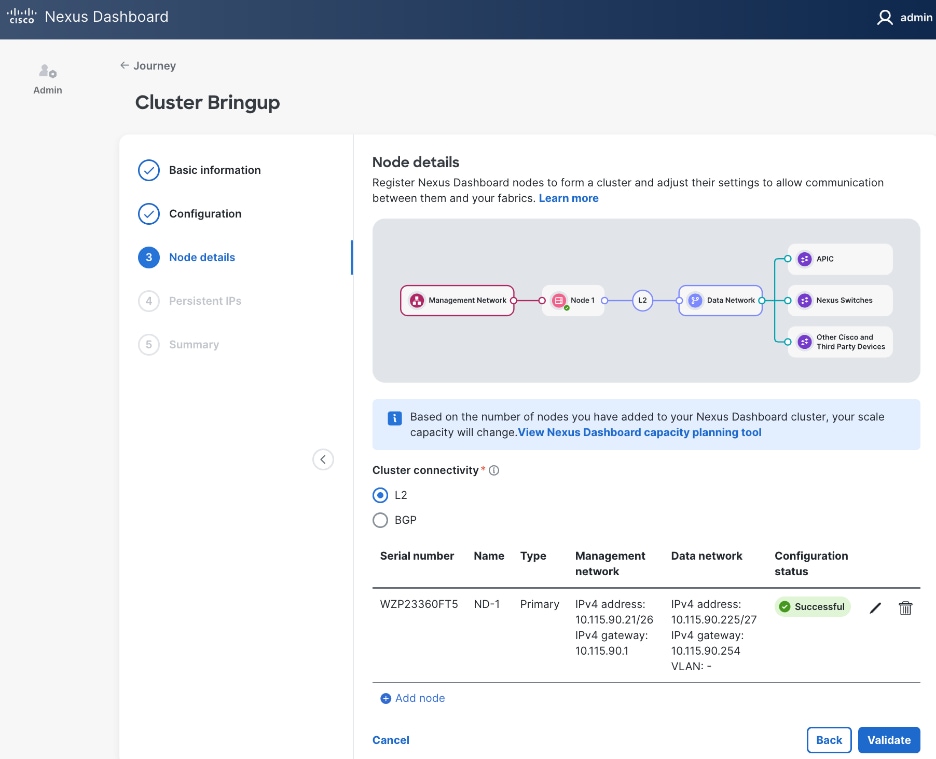

![]()

Step 25. Click Save.

Step 26. Click Validate.

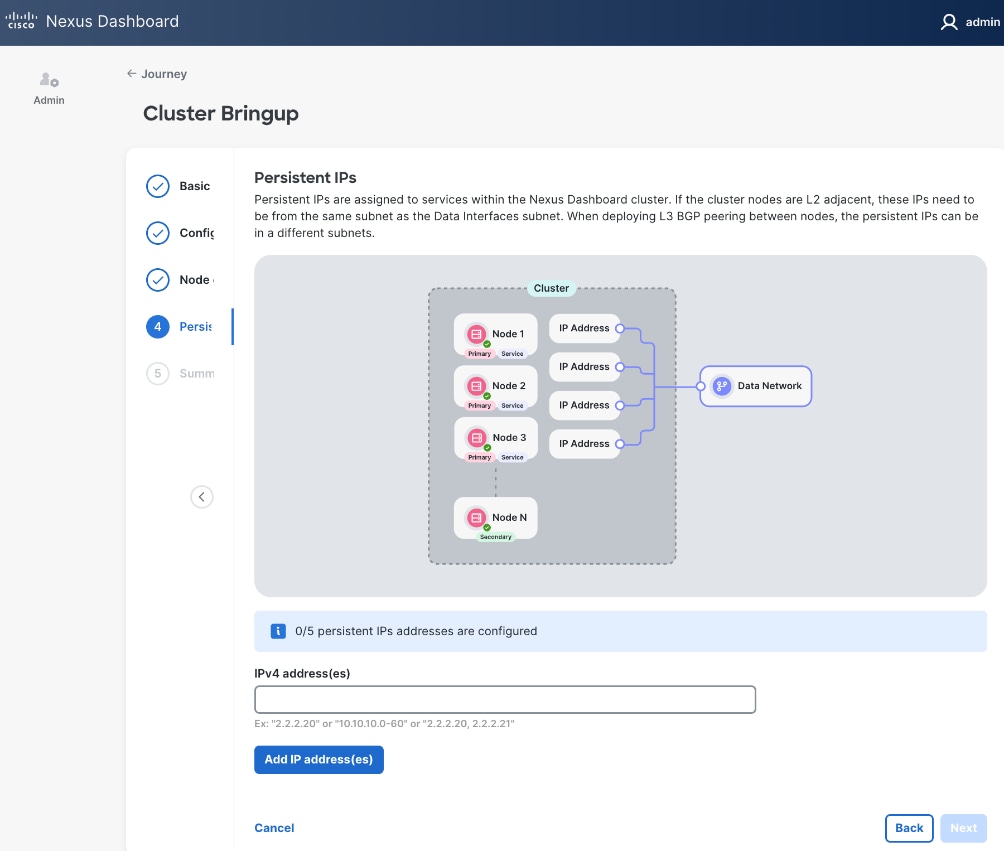

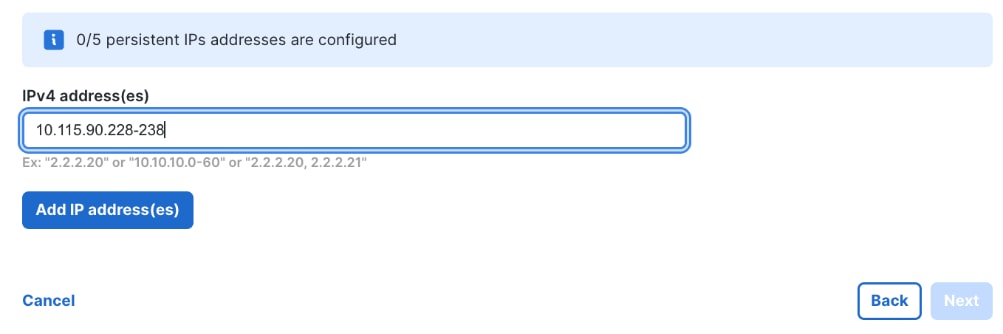

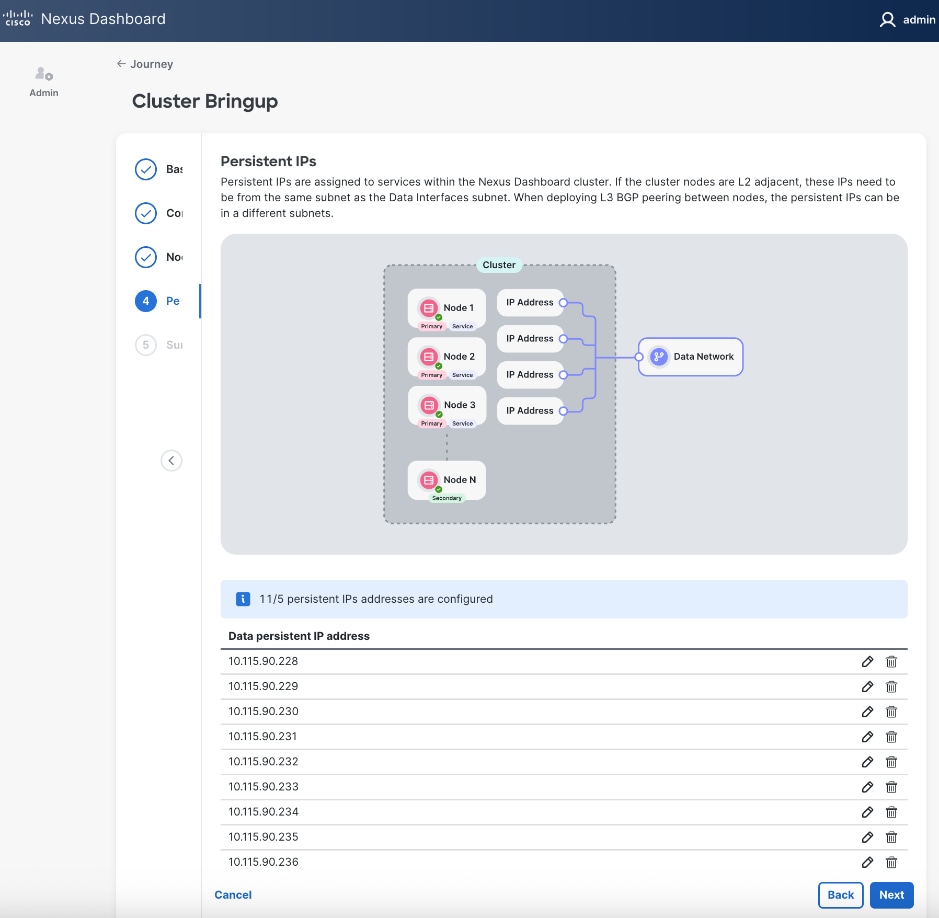

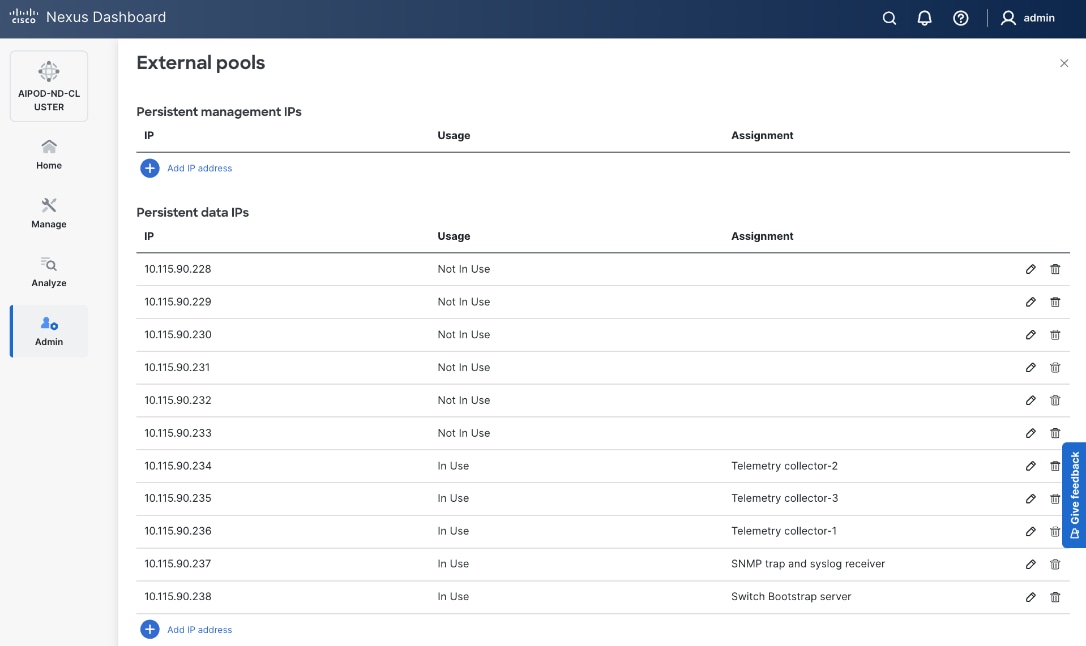

Step 27. Specify Persistent IPs - one by one or a range. Minimum of 5 must be provided. In L2 mode, they must be from the same subnet as ND Data Network subnet.

Step 28. Click Add IP address(es).

Step 29. Click Next.

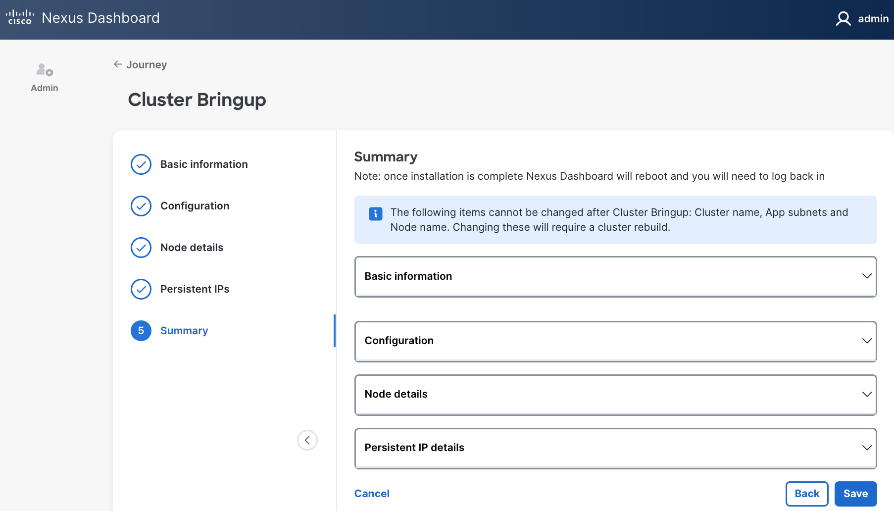

Step 30. In the Summary view, expand each section and verify all settings.

Step 31. Click Save.

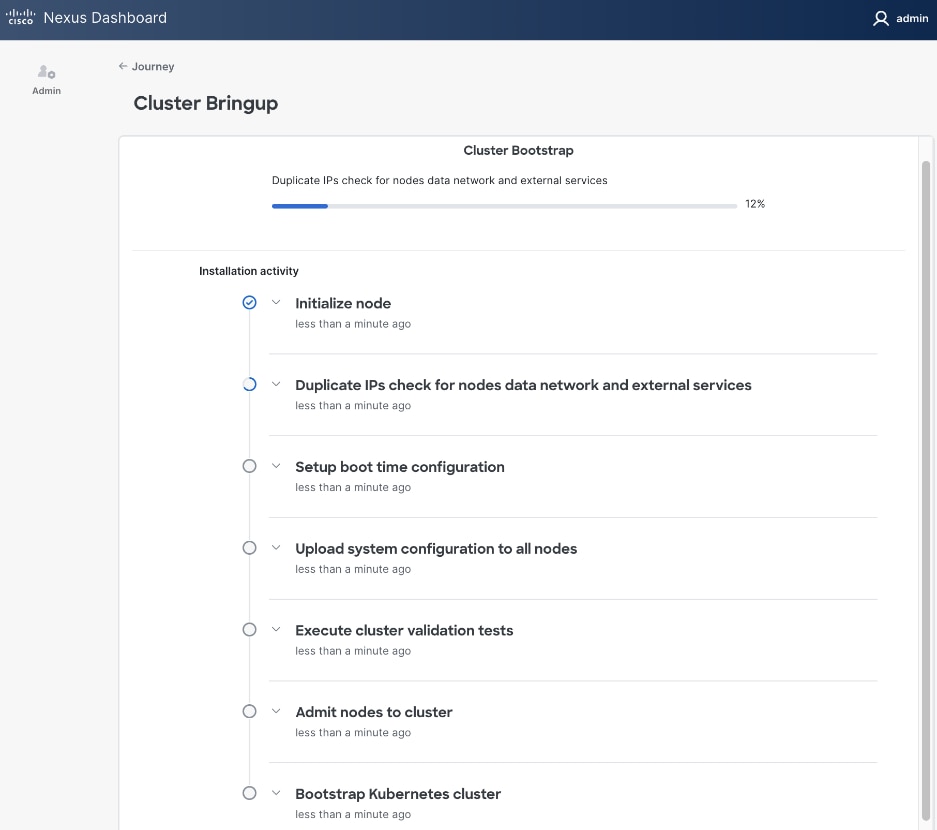

Step 32. Once the workflow finishes, the Cluster Install begins.

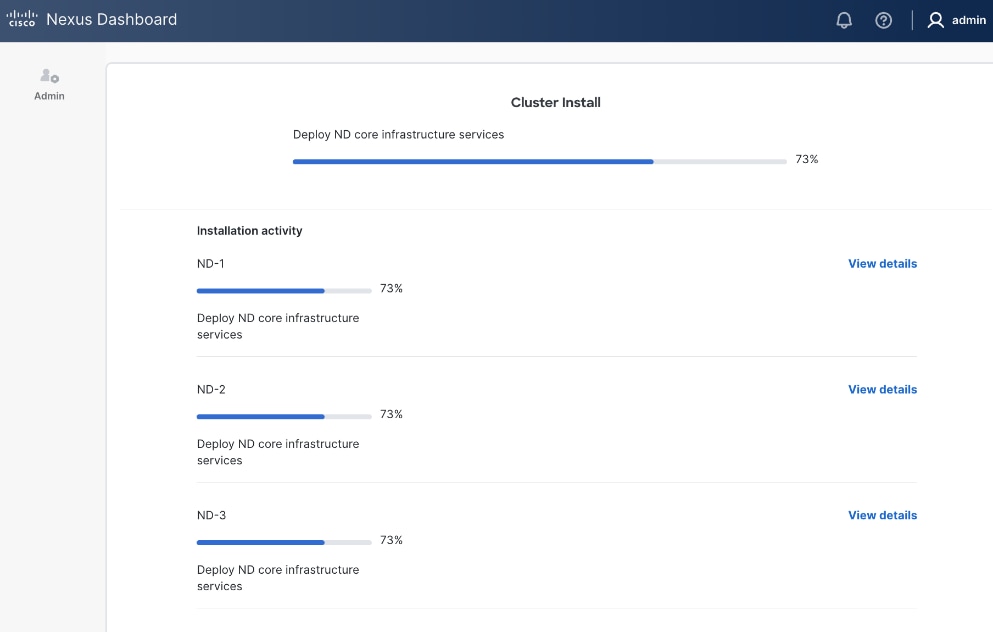

Step 33. Once cluster install completes, the services will be activated. This can take ~ 30 min. for this stage to complete.

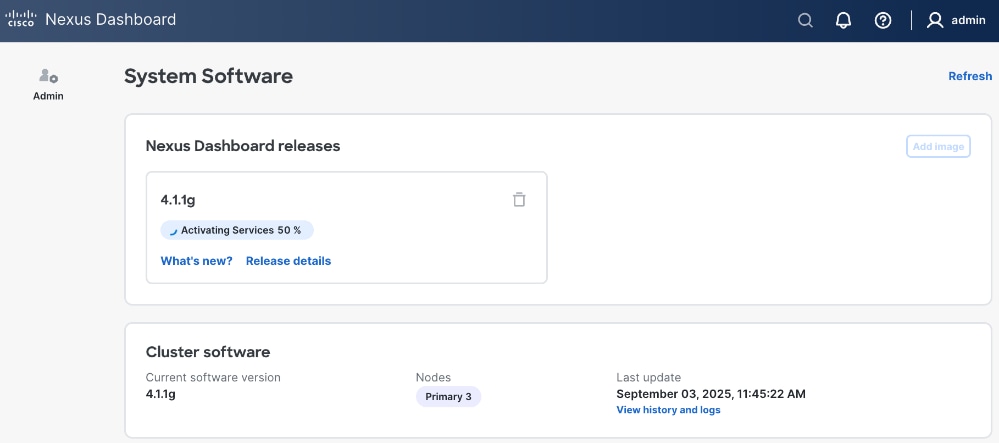

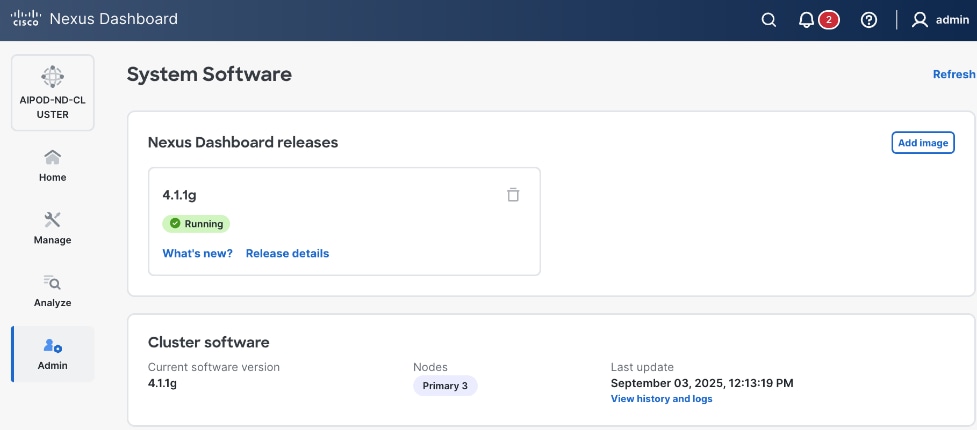

Step 34. Once ND cluster deployment completes, you will see the following status on first ND node at: https://10.115.90.21/system-software.

Step 35. You should now see more options on the left navigation menu and not just Admin as was the case earlier.

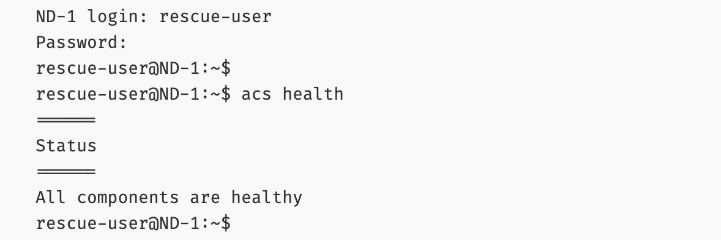

Step 36. SSH into ND nodes and verify the cluster is healthy:

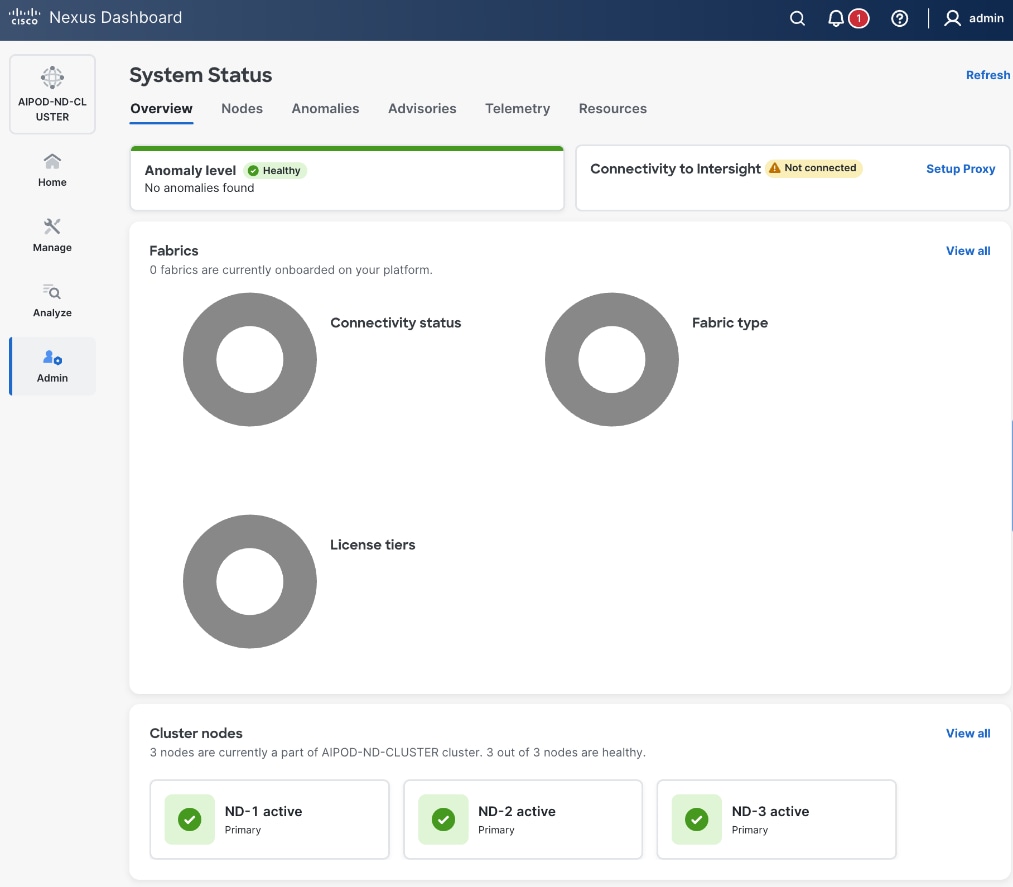

Step 37. From a browser, log back into any of the ND nodes and go to Admin > System Status.

Step 38. You can view how the previously allocated pool of persistent IPs are allocated as various capabilities are deployed on the fabrics being managed by this ND cluster as shown below.

Step 39. Review the overall status and addressing any remaining steps by stepping through the different options in the left navigation menu. For example, some optional but recommended steps could be:

● From AdminAdmin > Intersight, > Intersight, claim ND nodes in cisco.intersight.comcisco.intersight.com using the account (for example AI-POD) that is managing the compute nodes in the solution

● From Admin >Admin > Backup and Restore, Backup and Restore, setup backup and restore to save the ND configuration.

● From Analyze >Analyze > AnomaliesAnomalies and Advisories, Advisories, review and take action or acknowledge them if they are not relevant to your environment.

● From Admin > System Settings, review options in the Fabric Management tab.

● From AdminAdmin > System Settings, > System Settings, review options in the Flow Collection Flow Collection tab to enable Telemetry

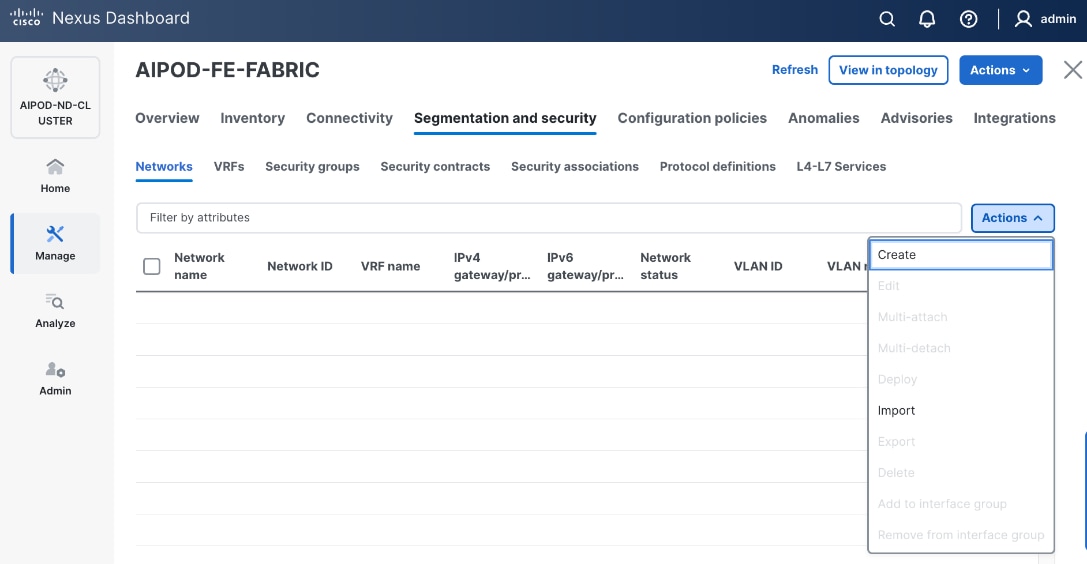

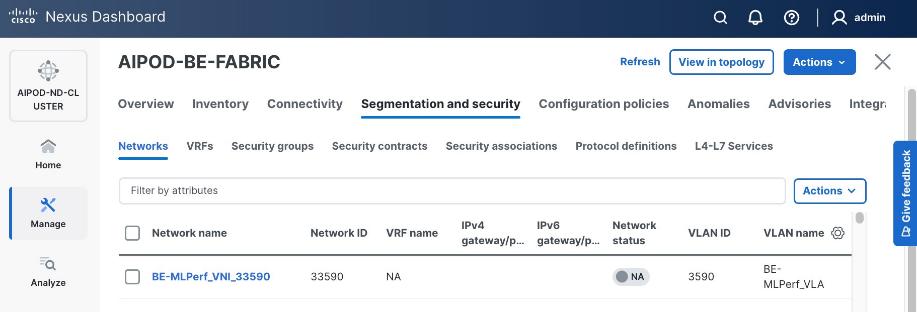

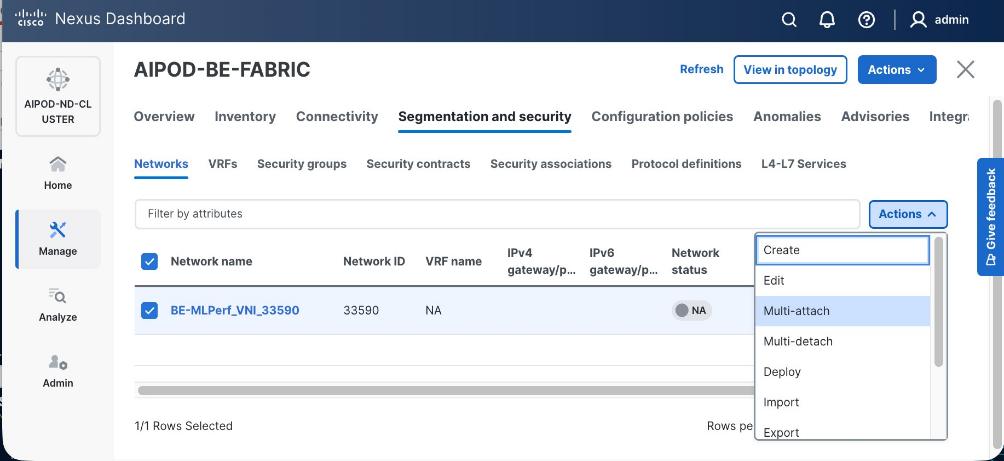

Deploy Frontend Fabric using Nexus Dashboard

The procedures outlined in this section will use Cisco Nexus Dashboard, specifically the fabric templates provided by ND, to deploy the frontend fabric in the AI POD solution. The frontend fabric is a 2-tier, 3-stage spine-leaf Clos topology, built using Cisco Nexus 9000 series data center switches. Once the fabric is deployed, ND will be used to provision connectivity between various infrastructure components connected to the frontend fabric. The Cisco UCS GPU servers in the AI POD training cluster will use the frontend (N-S) NIC to connect to the frontend fabric.

The procedures in this section will:

● Deploy a VXLAN EVPN fabric on the frontend leaf and spine switches, connected in a 2-tier spine-leaf topology

● Enable Virtual Port Channel (vPC) peering on compute/management leaf pairs and storage leaf pairs in the frontend fabric

● Provision L2 and in-band management connectivity to UCS server that will be used to host the control plane and workload management components for the AI workloads running on UCS GPU servers.

● Provisioning external connectivity from the frontend fabric to other enterprise internal and external networks. This includes connectivity to Cisco Intersight, Red Hat Hybrid Cloud Console and other SaaS services used in the AI POD solution.

● Provision Layer 2 and Layer 3 connectivity to Everpure FlashBlade//S from front end fabric as needed.

● Enable reachability between UCS nodes and Everpure FlashBlade//S to enable access to NFS and object data stores.

● Enable QoS in frontend fabric to ensure losses RDMA to the storage system.

Deploy VXLAN EVPN Fabric using Nexus Dashboard Templates

Assumptions and Prerequisites

● Nexus Dashboard cluster deployed

● All switches in the frontend fabric cabled in a spine-leaf topology

● Reachability from ND cluster to switches so that they can be discovered and added to the fabric

Setup Information

Table 8. Setup Parameters for FE Fabric: Deploy VXLAN EVPN Fabric

| Parameter Type |

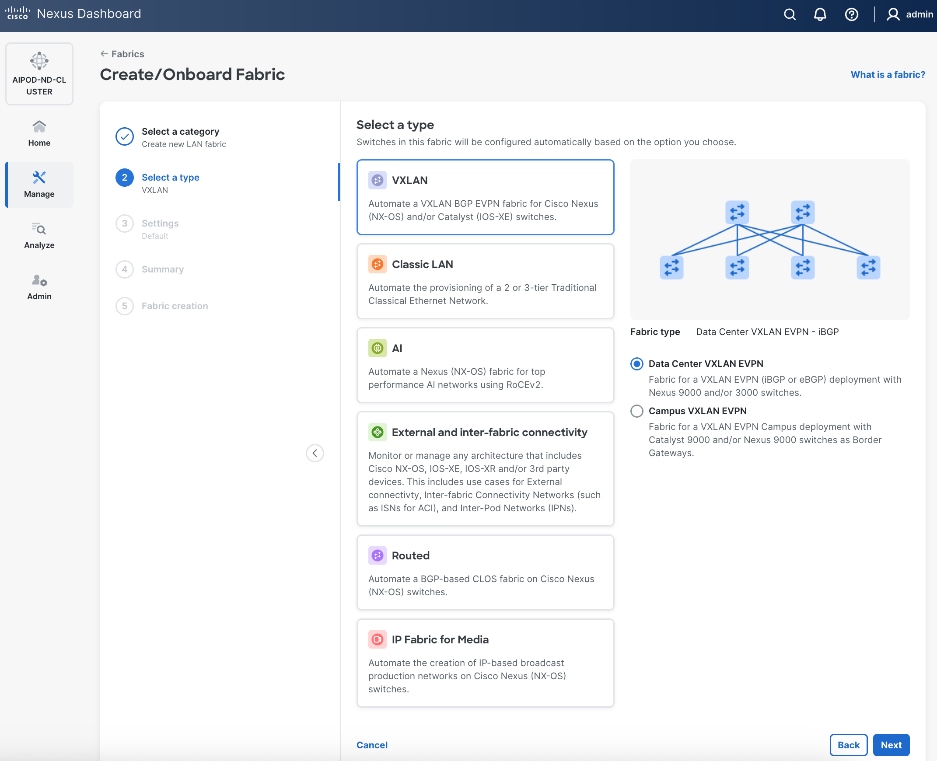

Parameter Name | Value |

Parameter Type |

| Create new LAN fabric workflow |

||

| Type |

VXLAN |

|

| Fabric Type |

Data Center VXLAN EVPN |

|

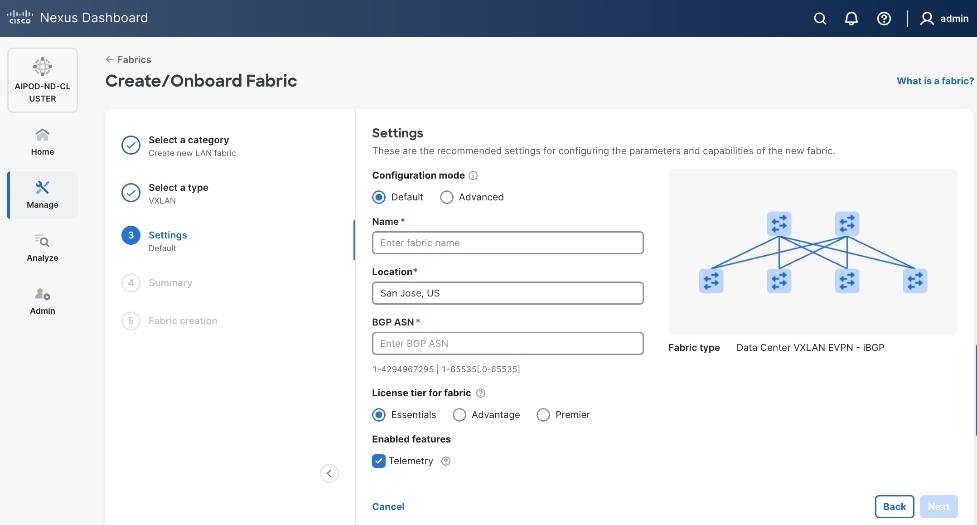

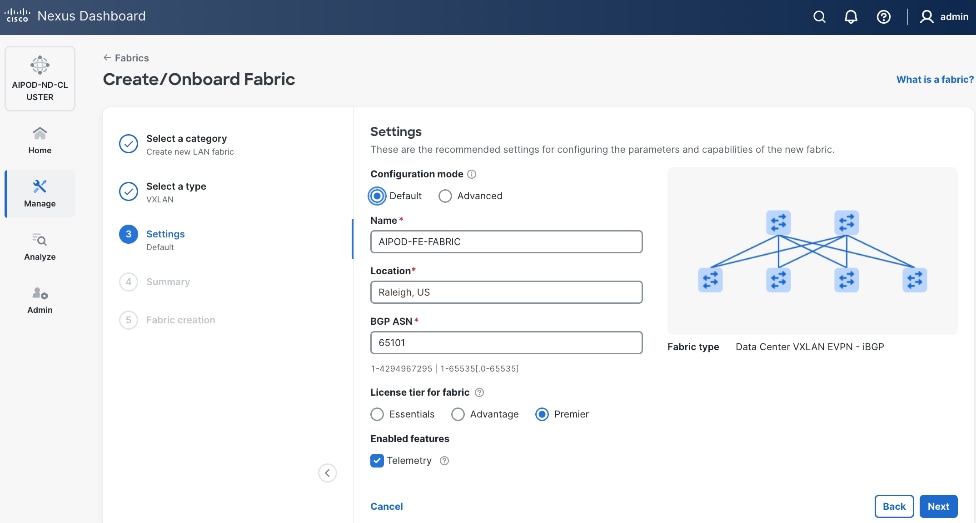

| Configuration Mode |

Default |

|

| Name |

AIPOD-FE-Fabric |

|

| Location |

Raleigh, US |

|

| BGP ASN |

65101 |

|

| License Tier for fabric |

Premier |

|

| Enabled Features |

Telemetry |

|

| Add switches without a reload |

Enabled |

|

| Set Default Credentials |

|

|

| Username |

admin |

|

| Password |

<specify> |

|

| Add Switches |

|

|

| Seed IP |

<specify> |

|

| Username |

Admin |

|

| Password |

<specify> |

|

| Max Hops |

<specify> |

|

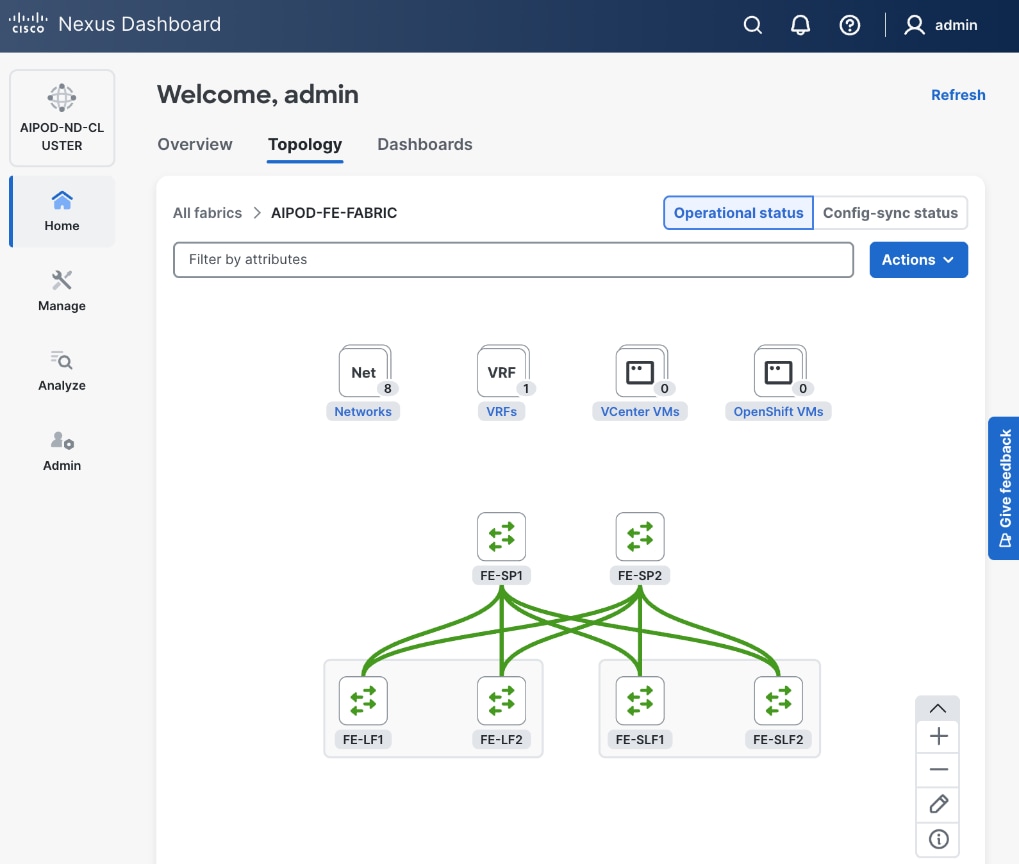

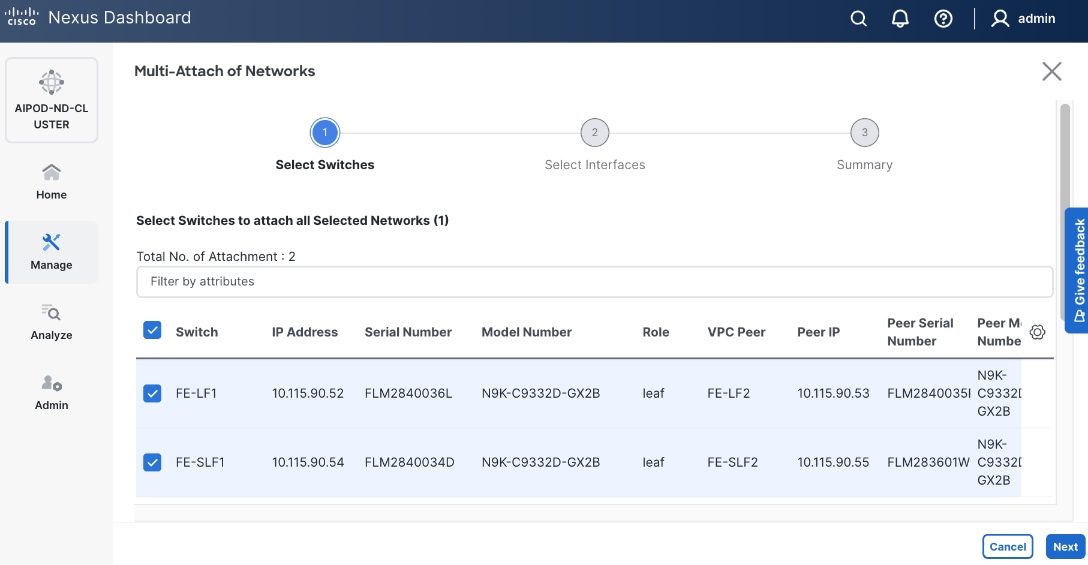

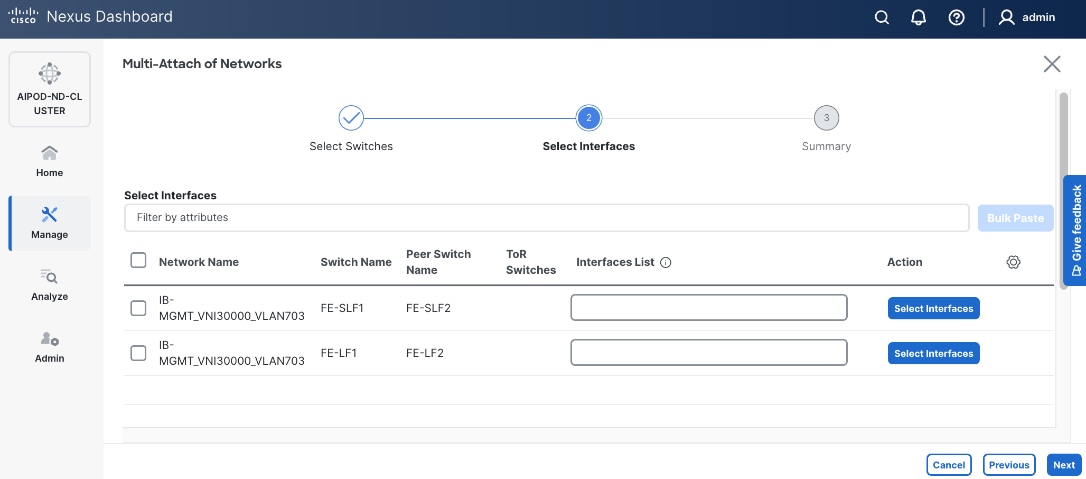

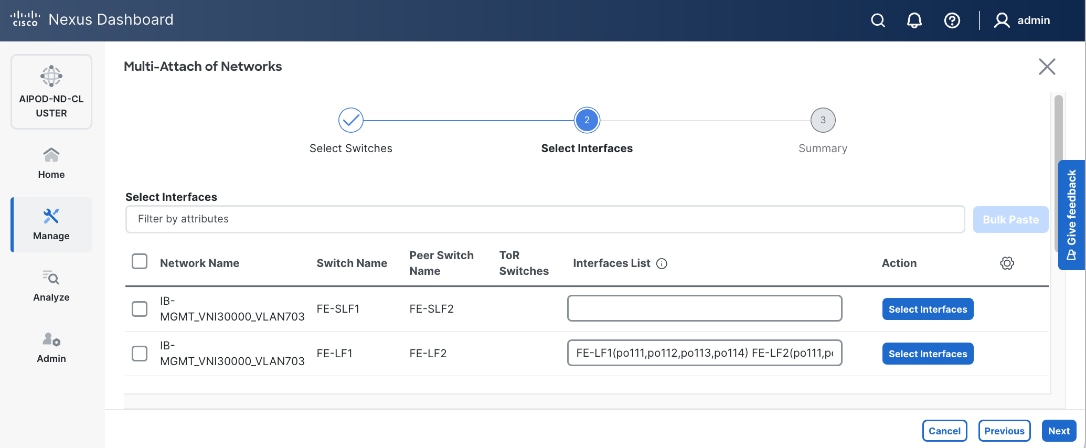

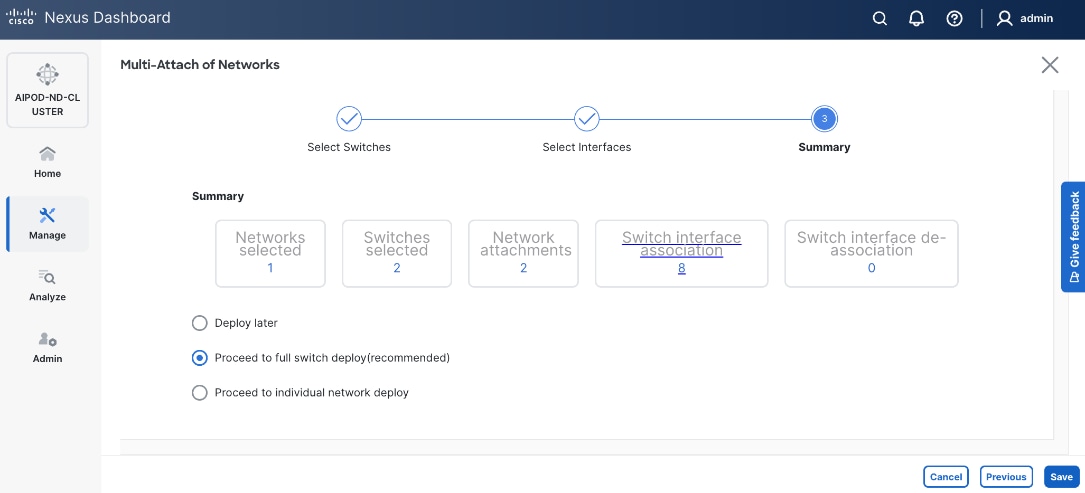

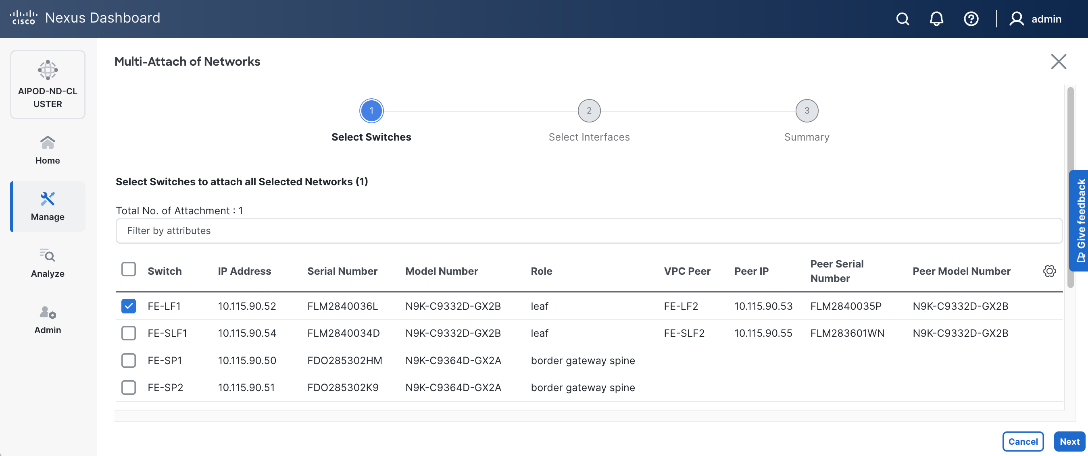

In this setup, the Nexus frontend fabric consisted of 2 spine and 4 leaf switches. The fabric switch details are listed in Table 9.

Table 9. Setup Parameters for FE Fabric: Fabric Switch Details

| Switch |

Role |

OOB IP |

Firmware |

Model |

| FE-LF1 |

Compute/Management Leaf |

10.115.90.52 |

10.4(5) |

Cisco Nexus 9332D-GX2B |

| FE-LF2 |

Compute/Management Leaf |

10.115.90.53 |

10.4(5) |

Cisco Nexus 9332D-GX2B |

| FE-SLF1 |

Storage Leaf |

10.115.90.54 |

10.4(5) |

Cisco Nexus 9332D-GX2B |

| FE-SLF2 |

Storage Leaf |

10.115.90.55 |

10.4(5) |

Cisco Nexus 9332D-GX2B |

| FE-SP1 |

Spine |

10.115.90.50 |

10.4(5) |

Cisco Nexus 9364D-GX2A |

| FE-SP2 |

Spine |

10.115.90.51 |

10.4(5) |

Cisco Nexus 9364D-GX2A |

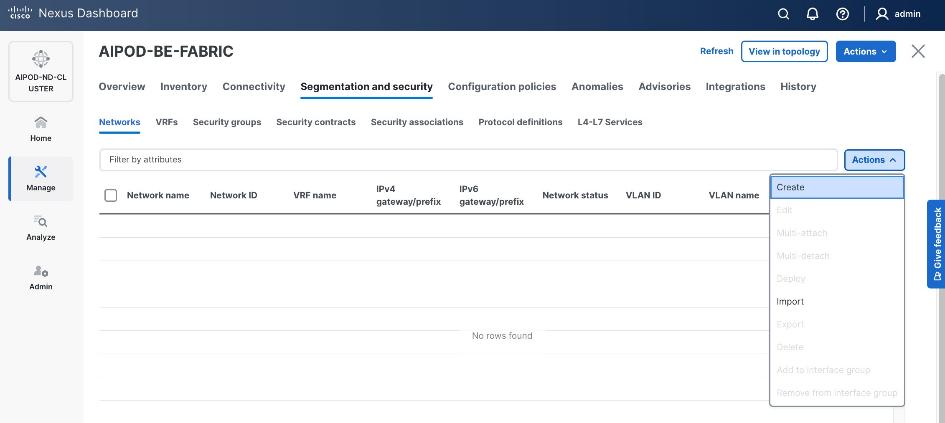

Deployment Steps

To deploy a VXLAN EVPN Frontend Fabric, follow the procedures below.

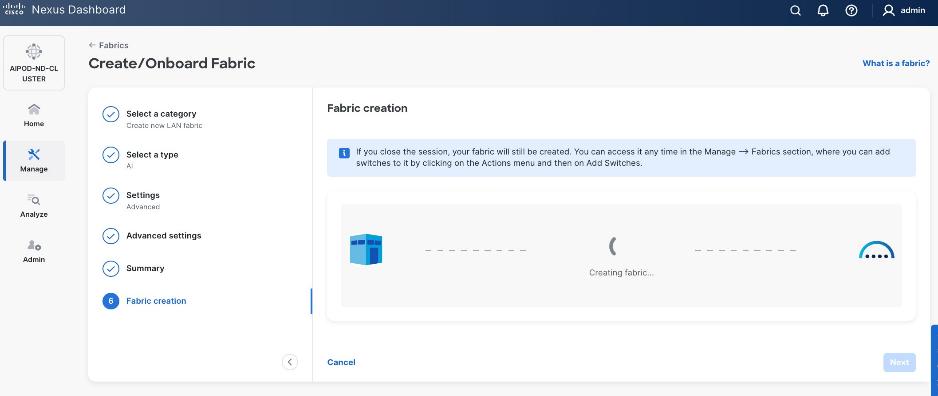

Procedure 1. Deploy VXLAN EVPN fabric on the two-tier spine and leaf switches

Step 1. From a browser, go to the management IP of any node in the Nexus Dashboard cluster. Log in using admin account.

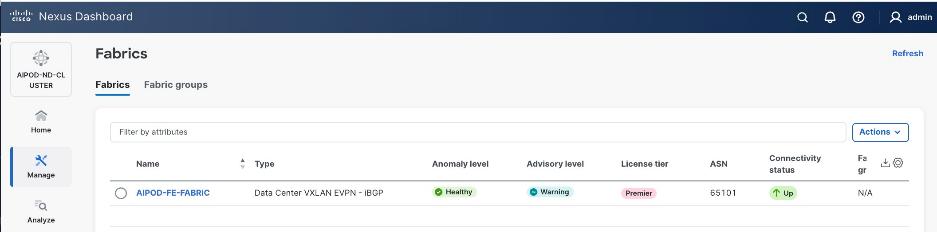

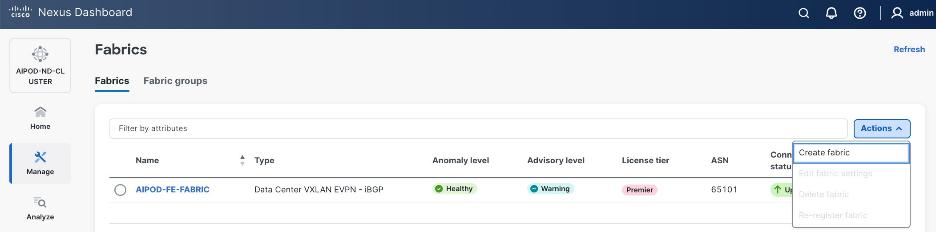

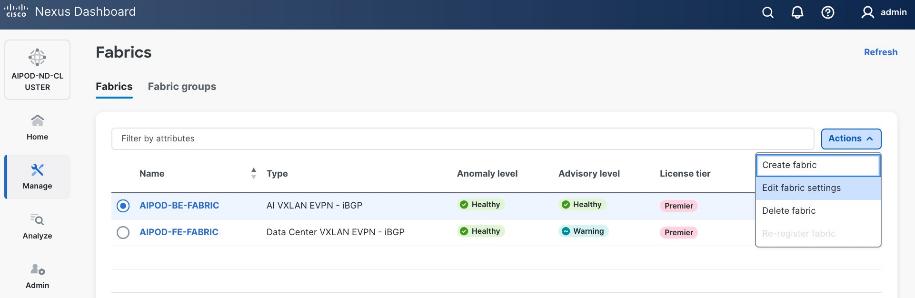

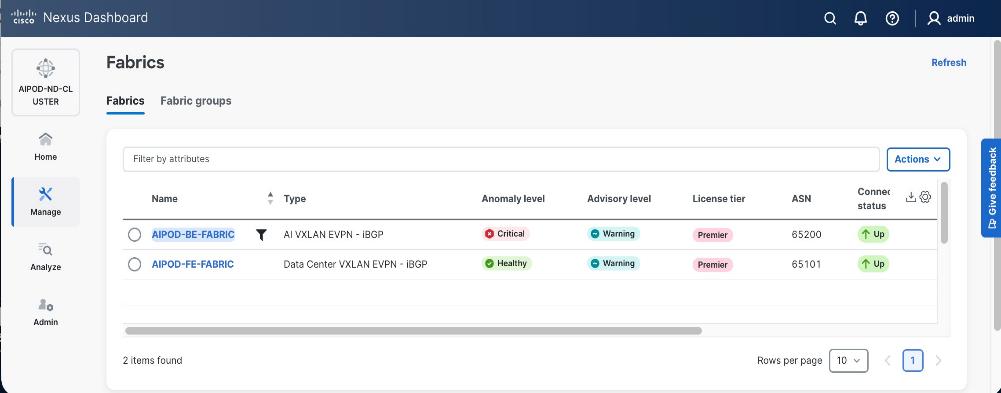

Step 2. From the left navigation menu, go to Manage > Fabrics.

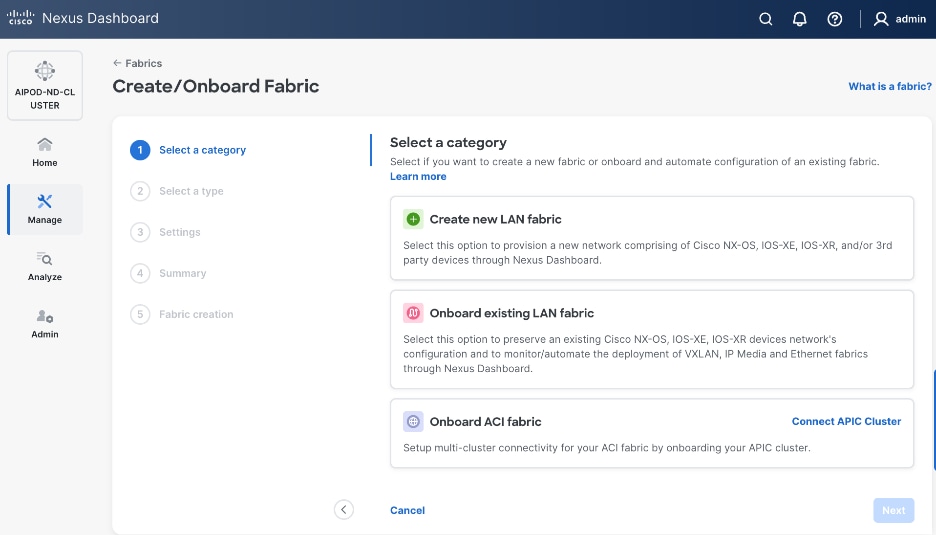

Step 3. Click Actions and select Create Fabric from the drop-down list.

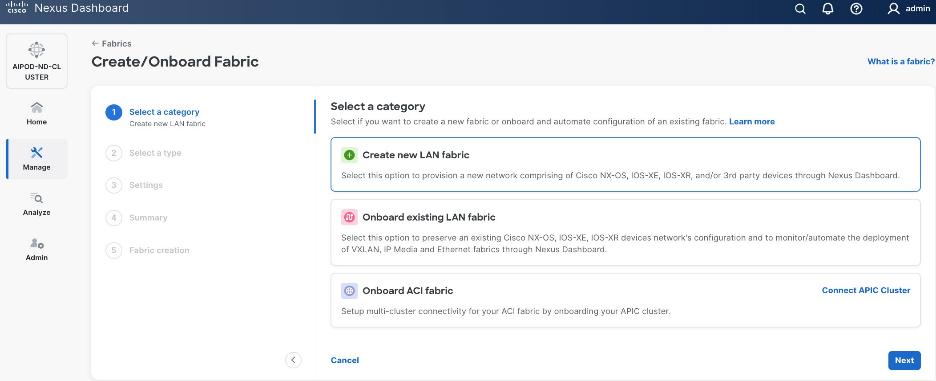

Step 4. Select Create new LAN fabric box. Click Next.

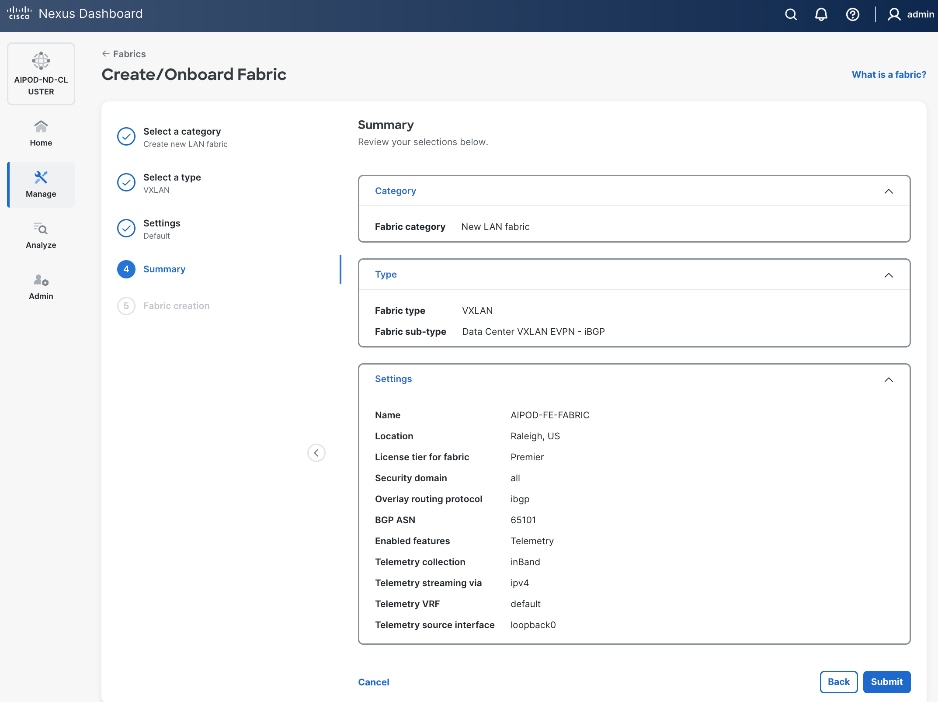

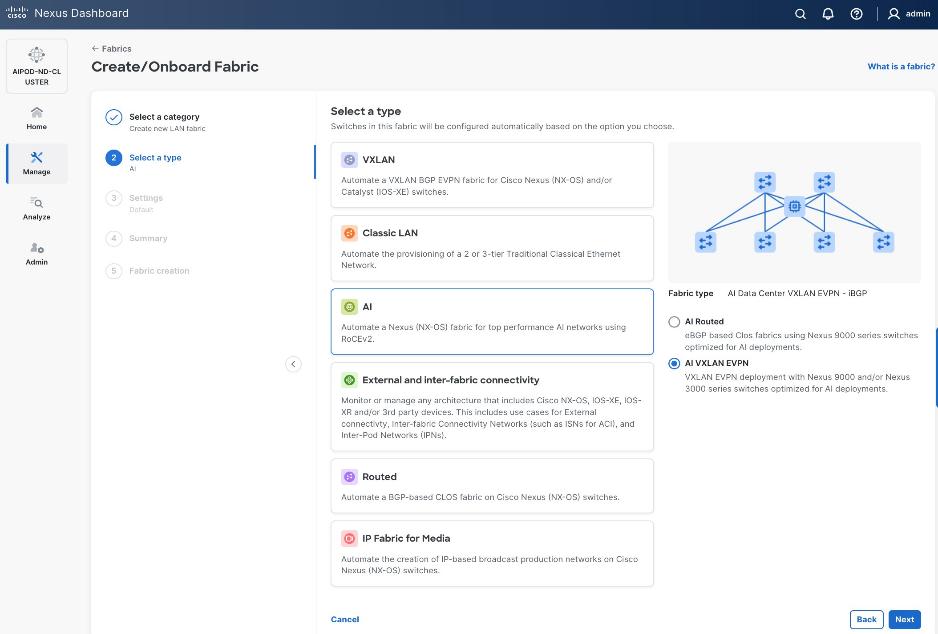

Step 5. Select VXLAN and radio button for Data Center VXLAN EVPN for the fabric type. Click Next.

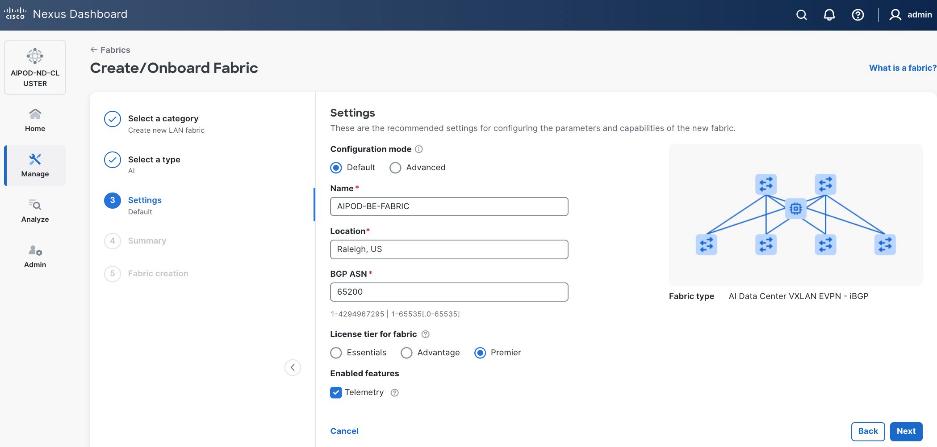

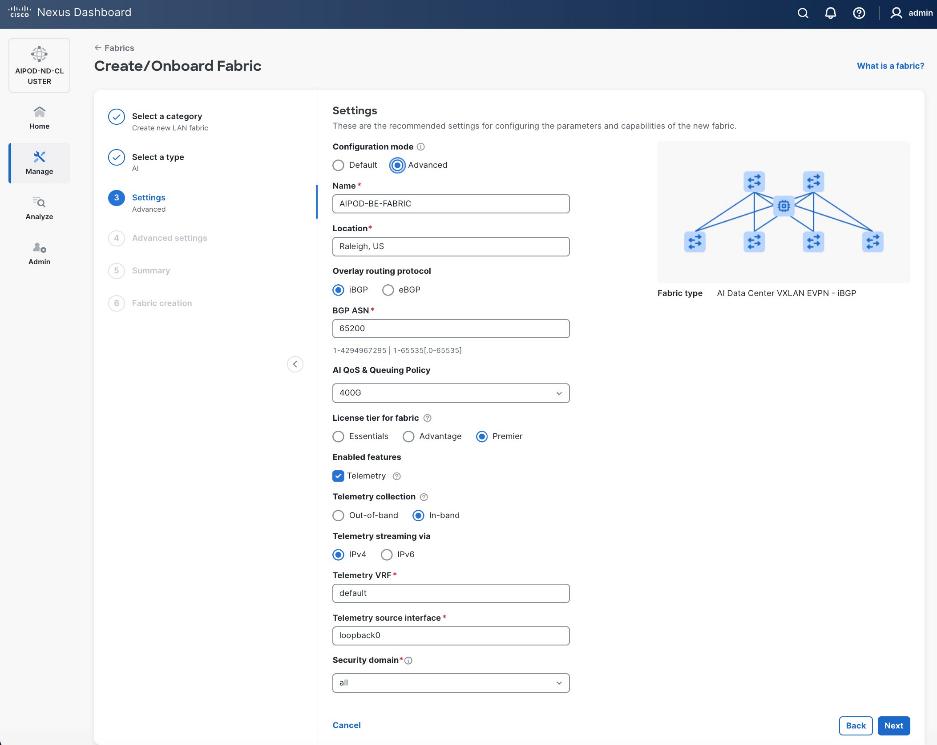

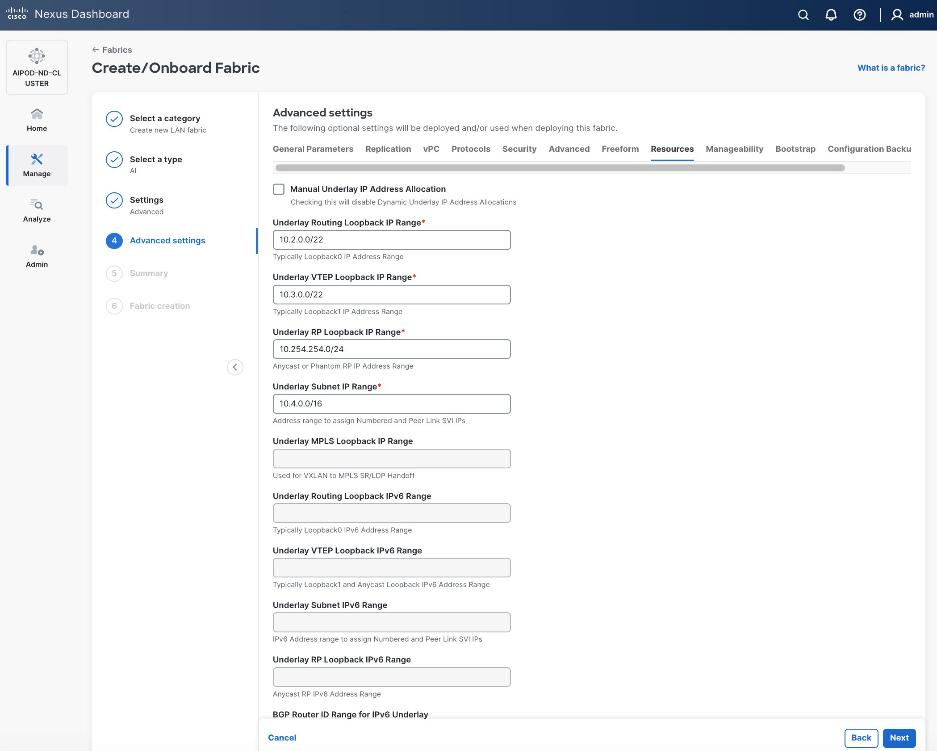

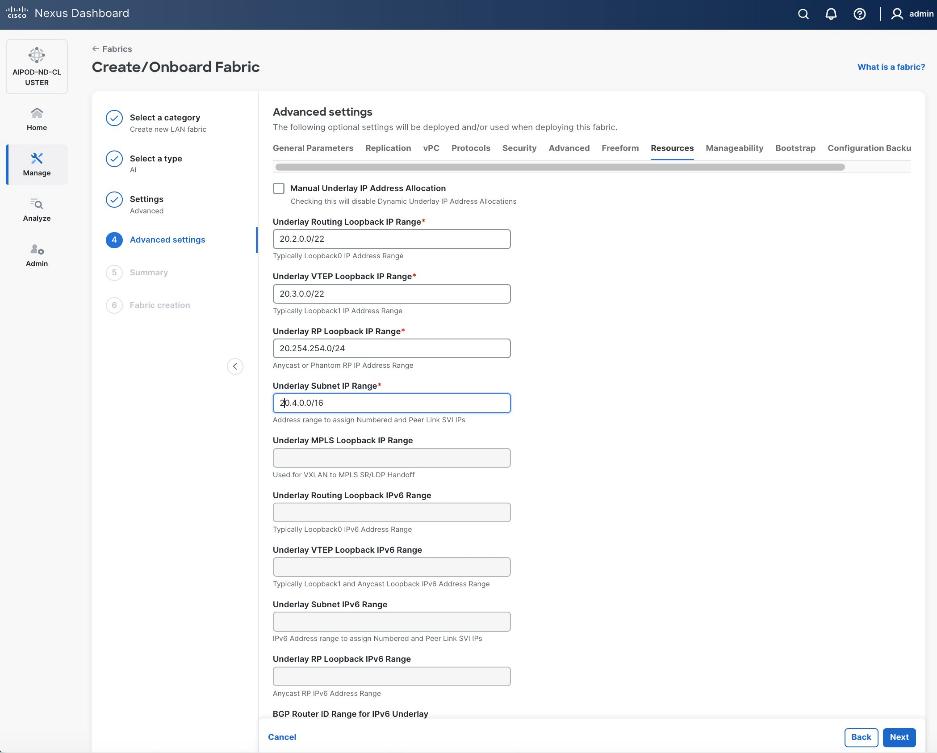

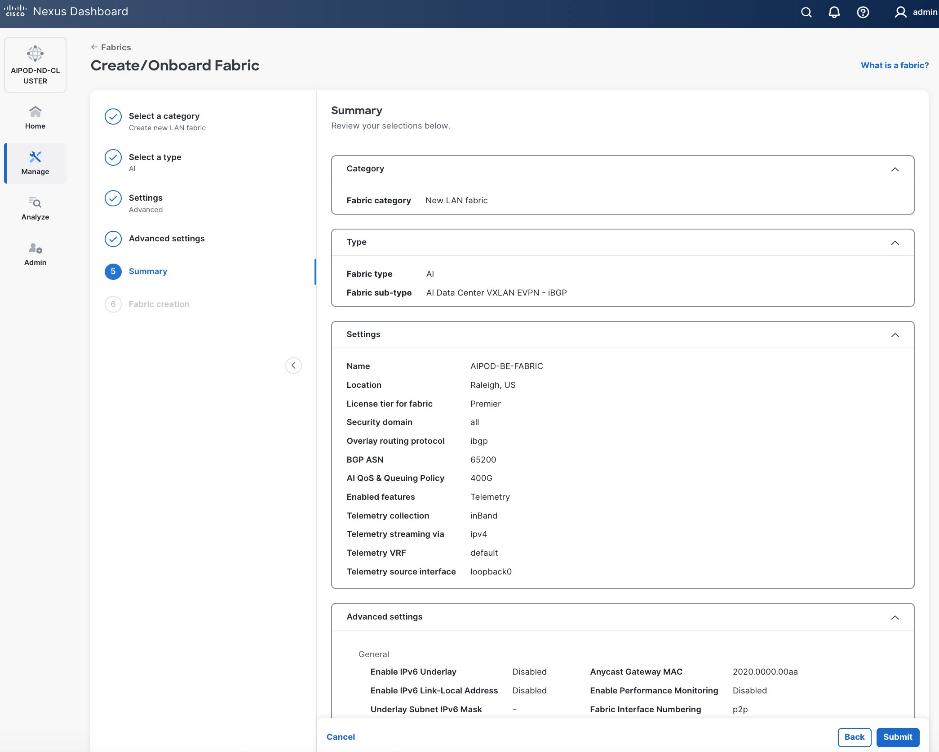

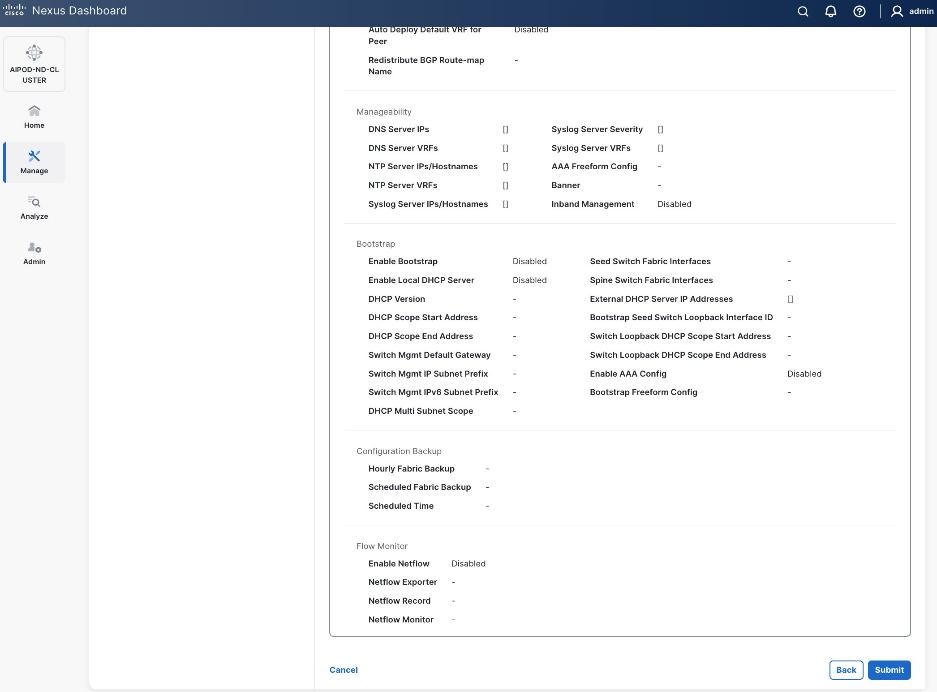

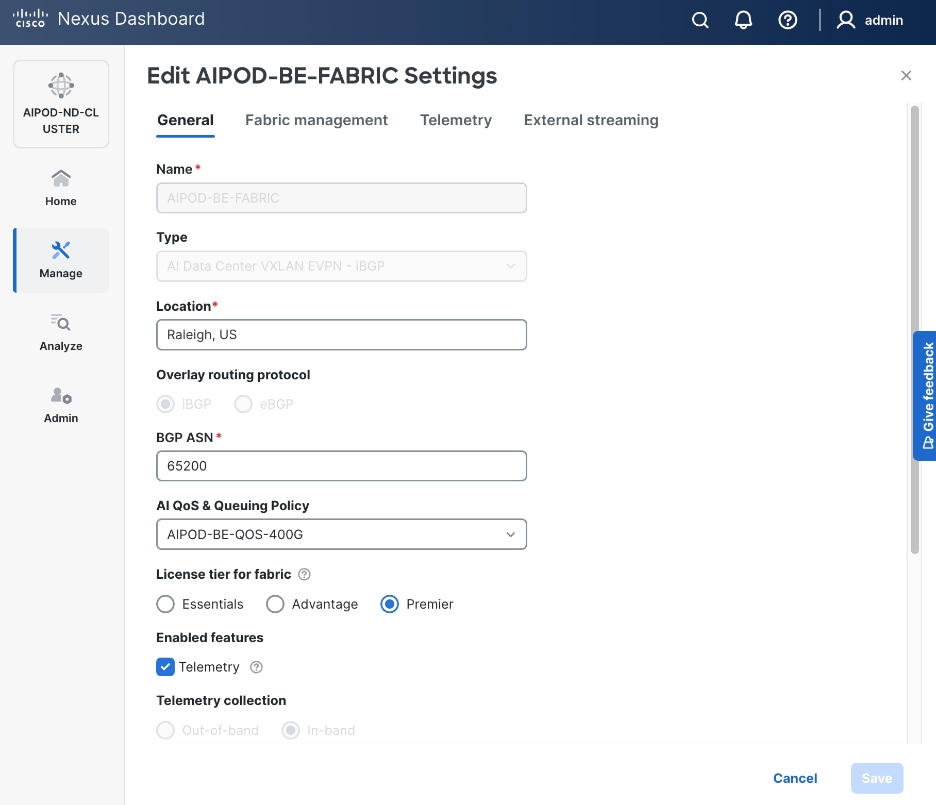

Step 6. For Configuration Mode, keep the Default option. Specify Name, Location, and BGP ASN for fabric. Also select the Licensing tier for fabric from the options available. Premier is required for advanced network analytics and day 2 operations. Click the ? icon to see the features available in each tier.

Step 7. Click Next.

Step 8. In the Summary view, verify the settings and click Submit.

Step 9. Click Submit.

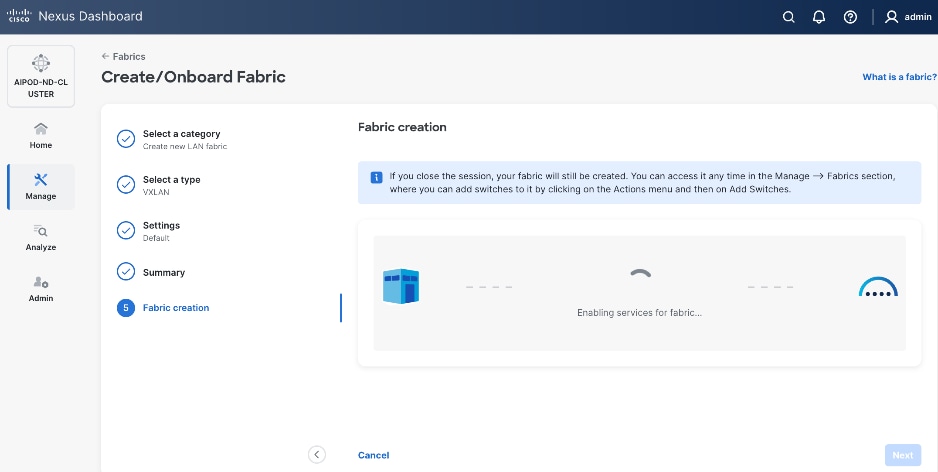

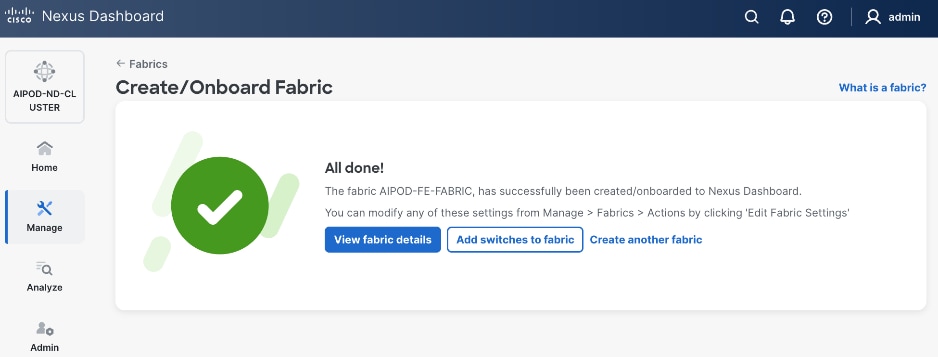

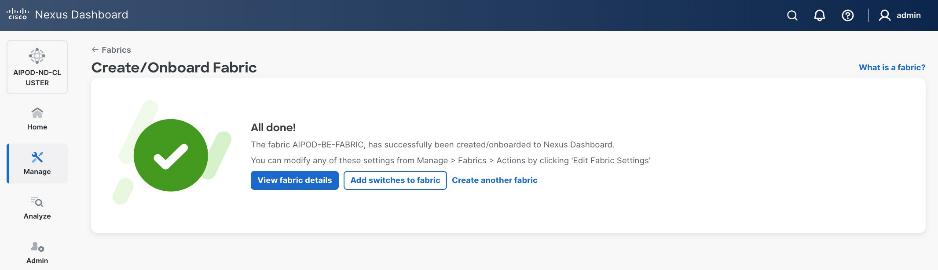

Step 10. When Fabric Creation completes, you will see the following:

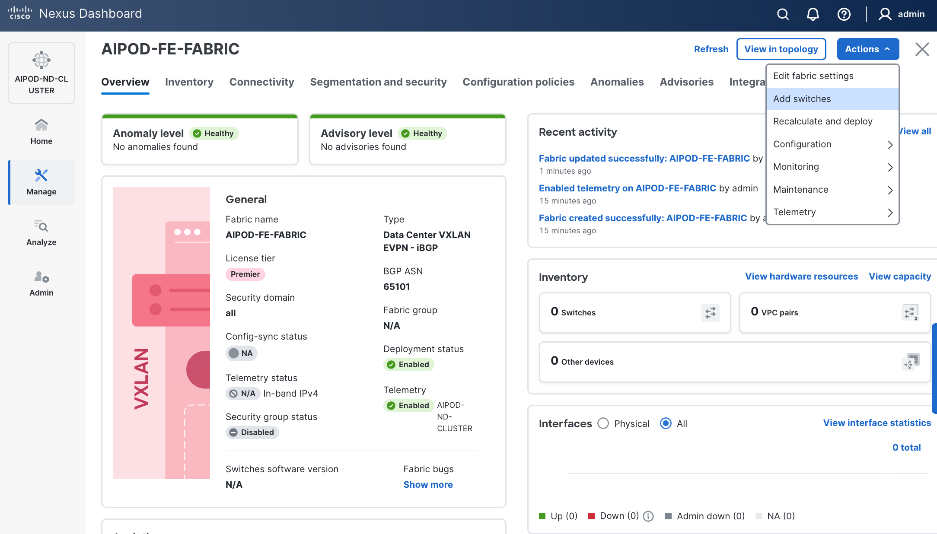

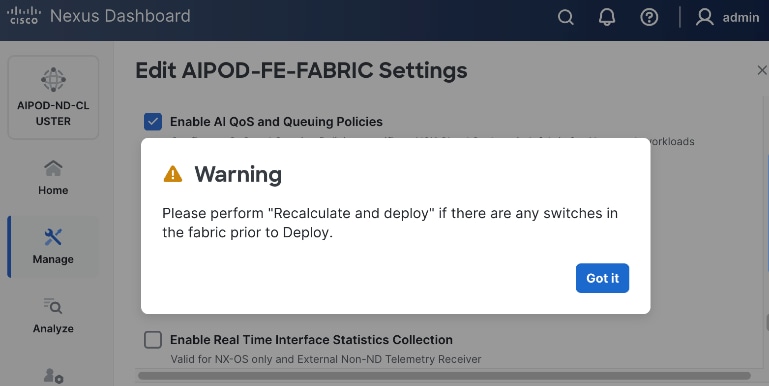

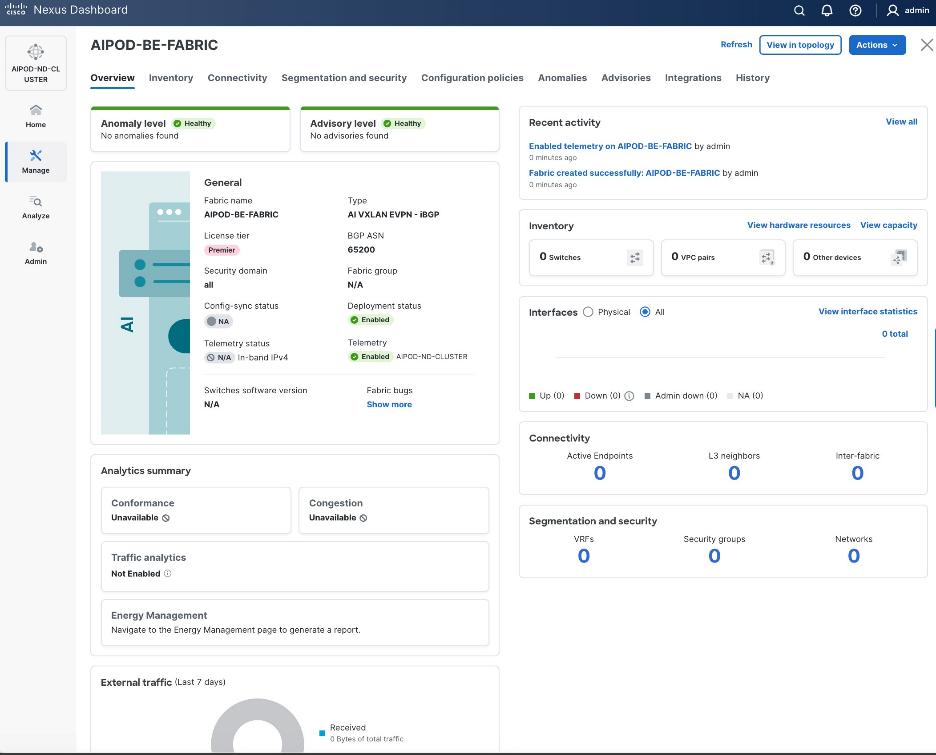

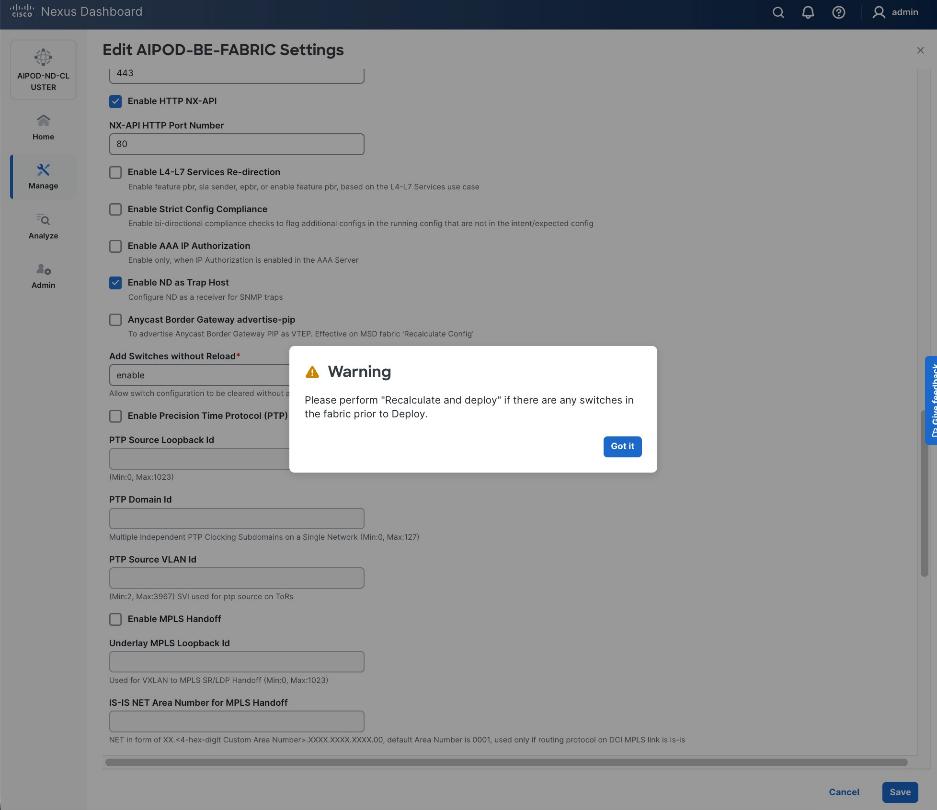

Step 11. To add switches to this new fabric without a reload, click View fabric details. Select Fabric Management > Advanced tabs and scroll down to find the field for Add switches without Reload and change setting to enable. Click Save, followed by Got it in the pop-up window.

Step 12. From the Manage > Fabrics view, click the fabric name to add switches to the fabric.

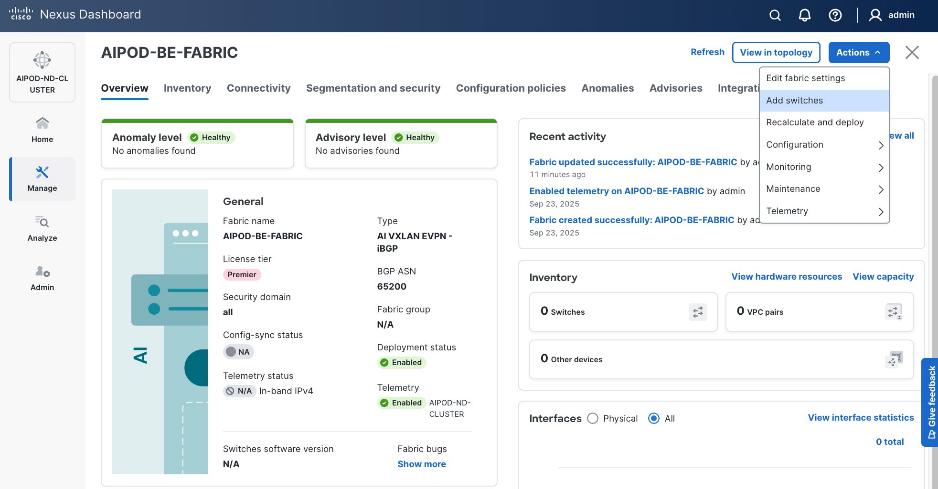

Step 13. Click Actions and select Add Switches from the drop-down list.

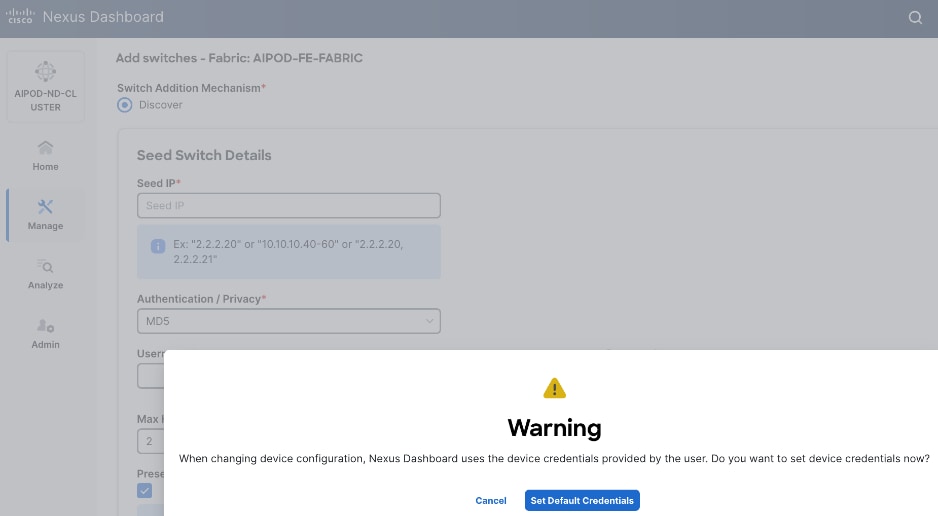

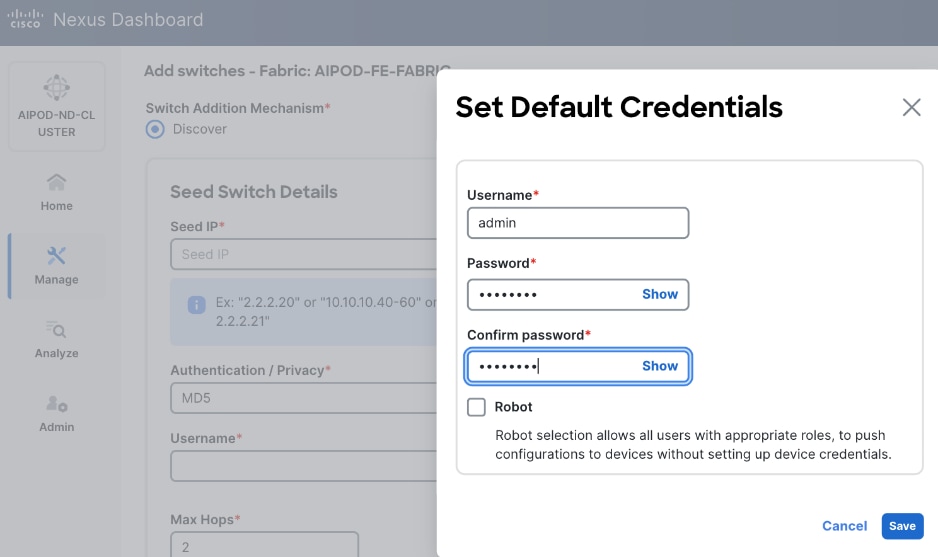

Step 14. In the pop-up window, click Set Default Credentials.

Step 15. Specify username and password. Click Save.

Step 16. Click Ok.

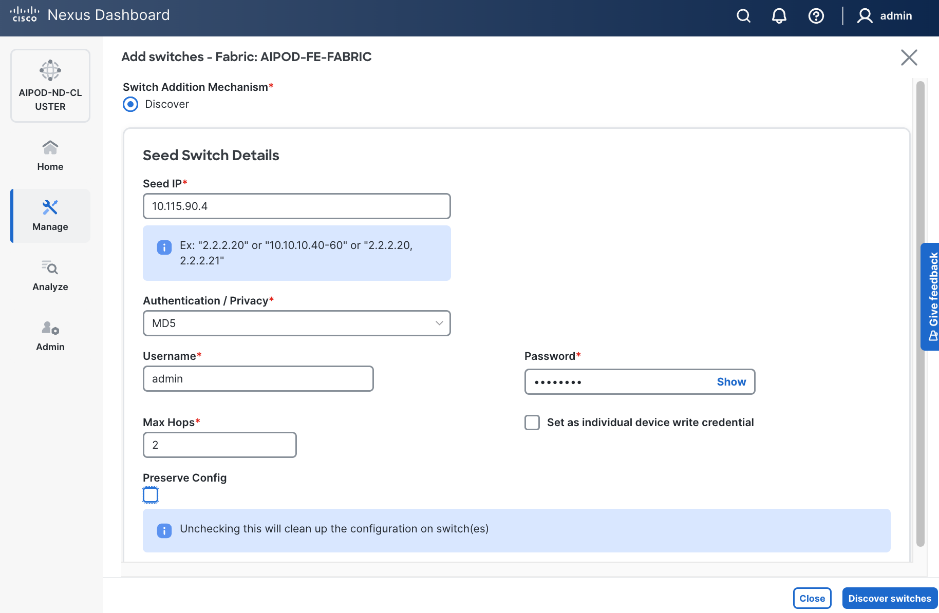

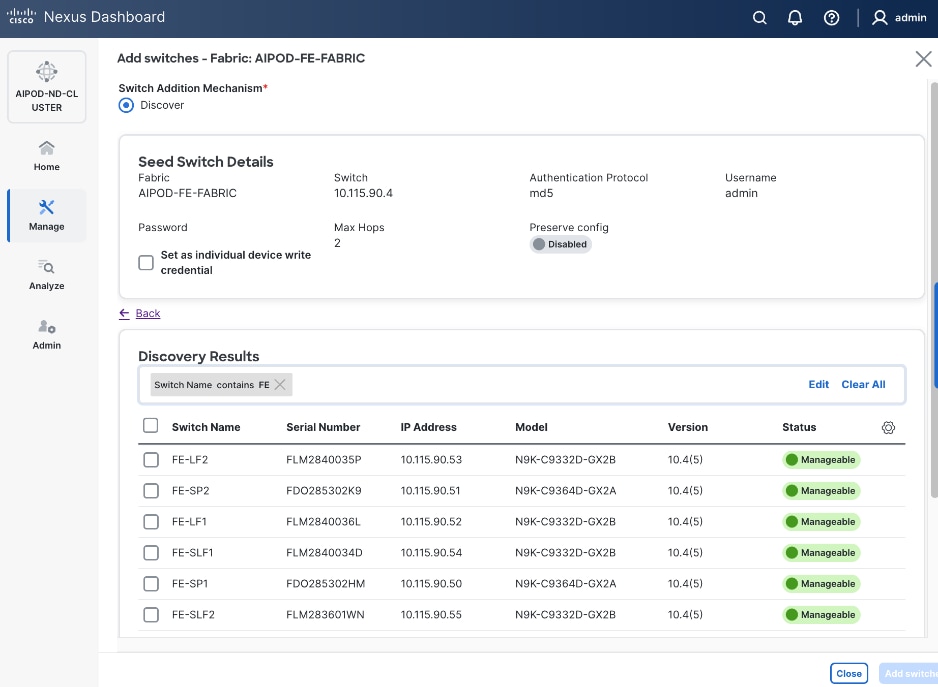

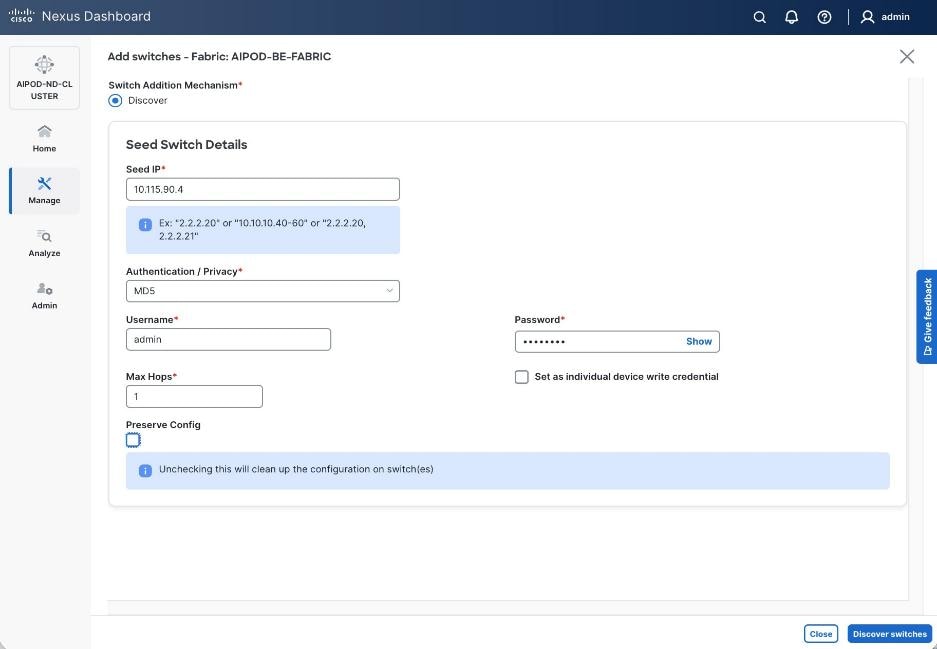

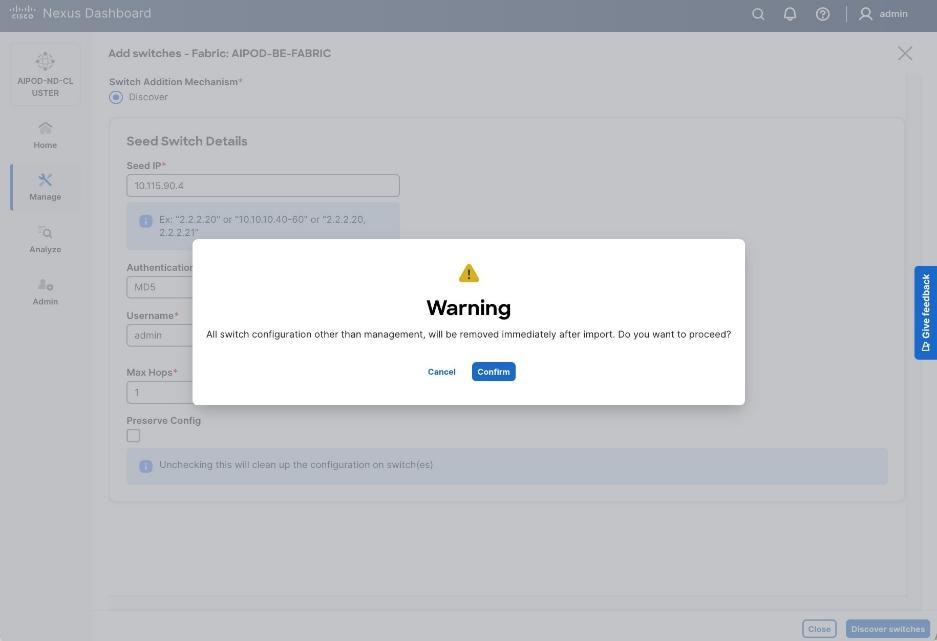

Step 17. Specify Seed IP, username and password. Adjust Max hops as needed. Click Discover Switches.

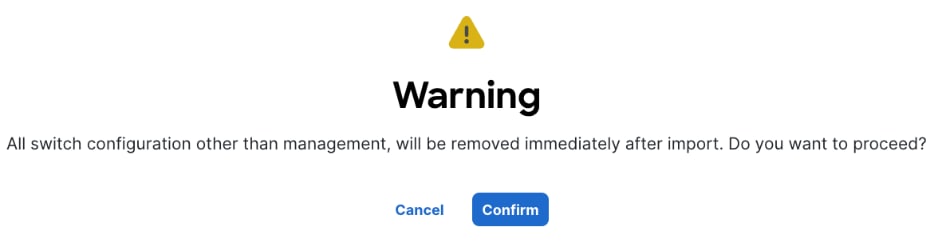

Step 18. Click Confirm in the pop-up Warning.

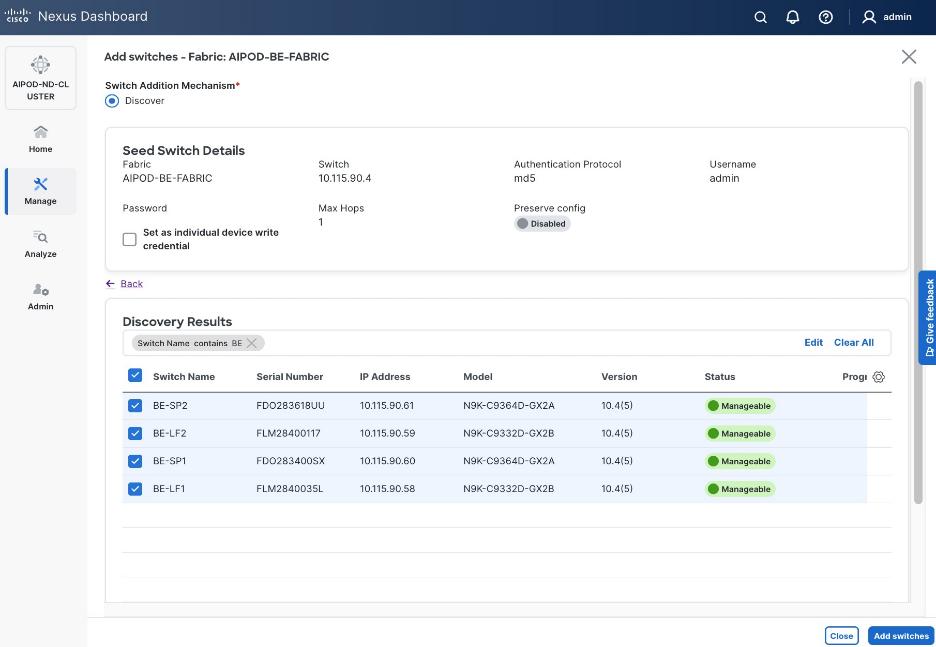

Step 19. Filter the discovered switch list as needed to view only the switches you want to add.

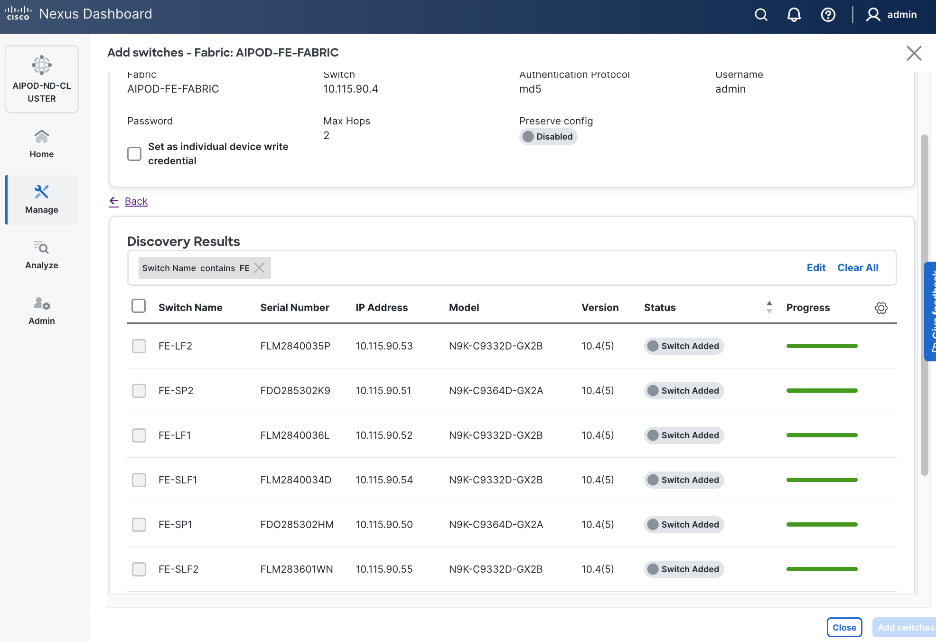

Step 20. Select all switches to be added. Click Add switches.

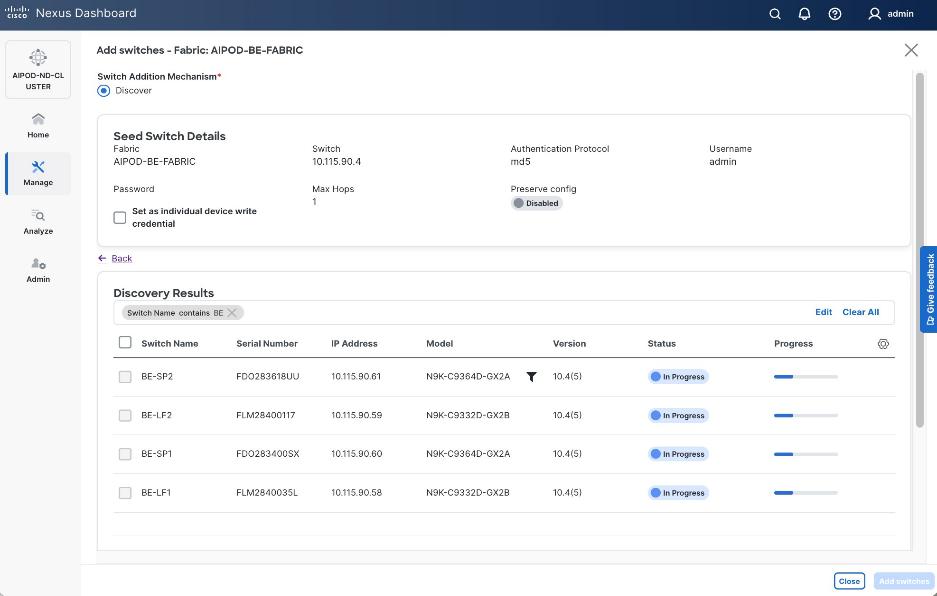

Step 21. Click Close when all switches have been added.

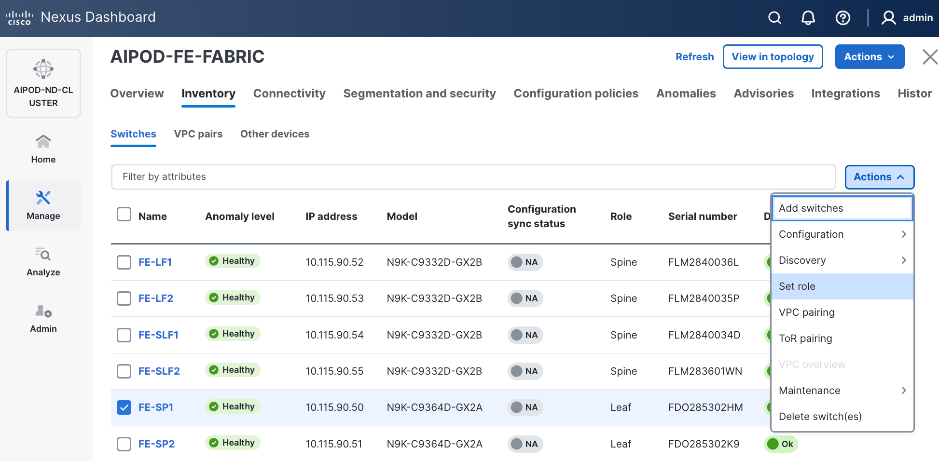

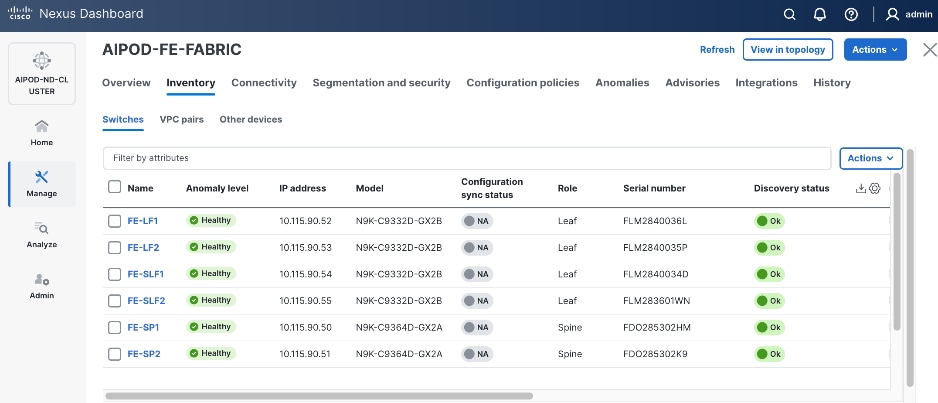

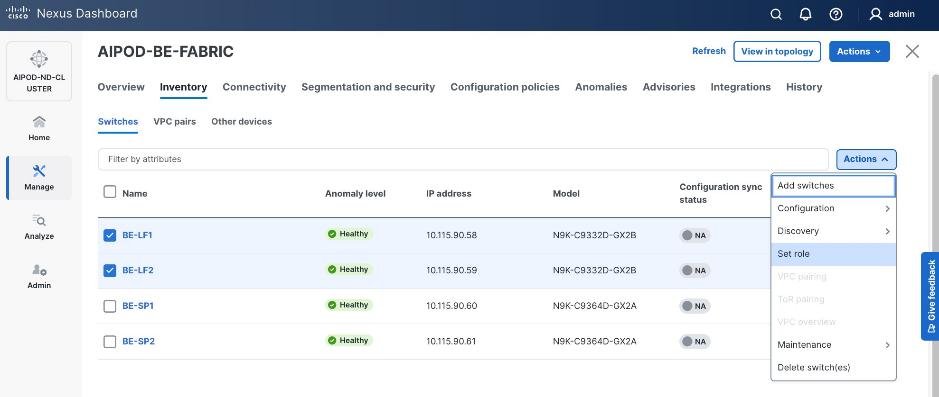

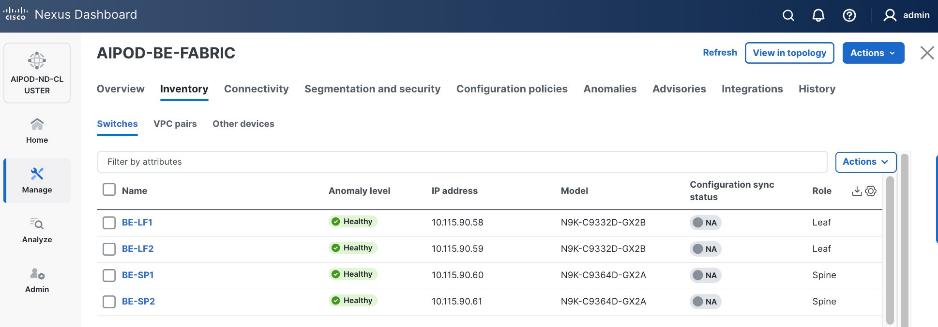

Step 22. From the Manage > Fabrics, select the fabric and click Inventory tab.

Step 23. For each switch in the list, verify Role is correct. To change the role, select the switch and then click the lower Actions button and select Set role from the drop-down list.

Step 24. In the Select Role pop-up window, select the correct role from the list and click Select.

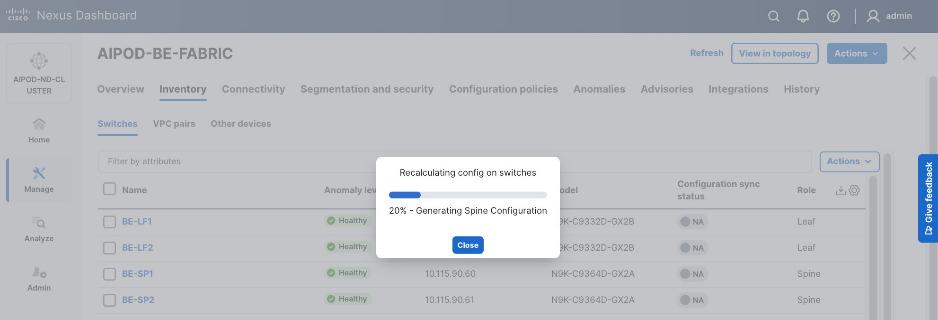

Step 25. Click Ok in the pop-up warning to perform "Recalculate and deploy" to complete the change.

Step 26. Repeat steps 1 – 25 to select and confirm the role for all switches in the fabric.

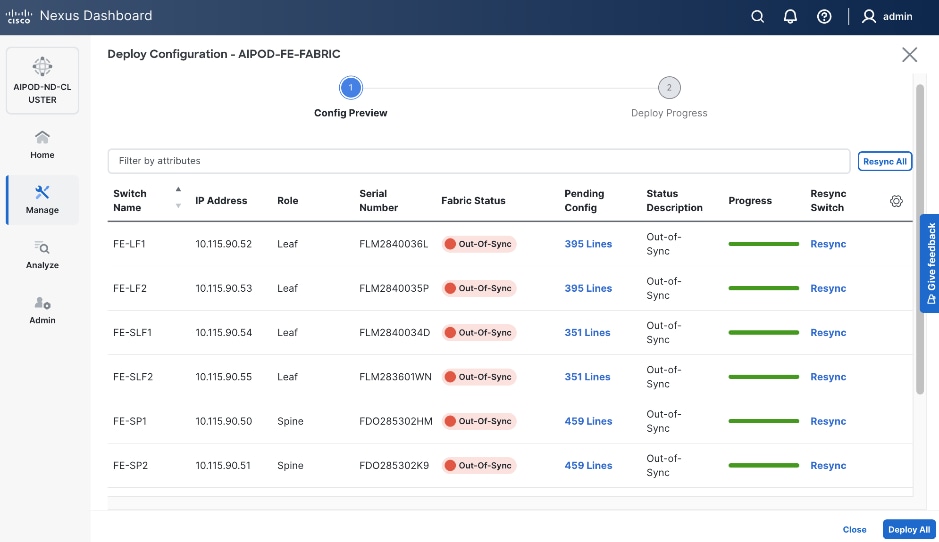

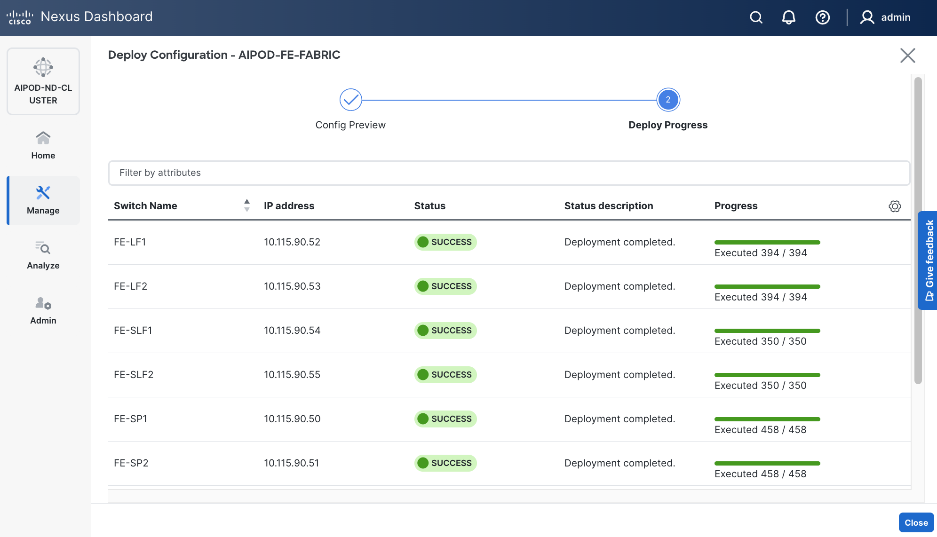

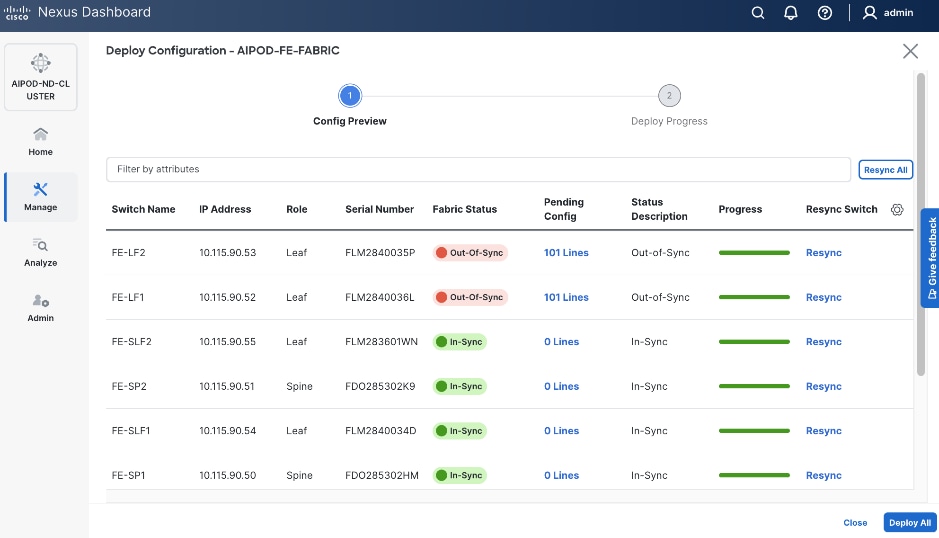

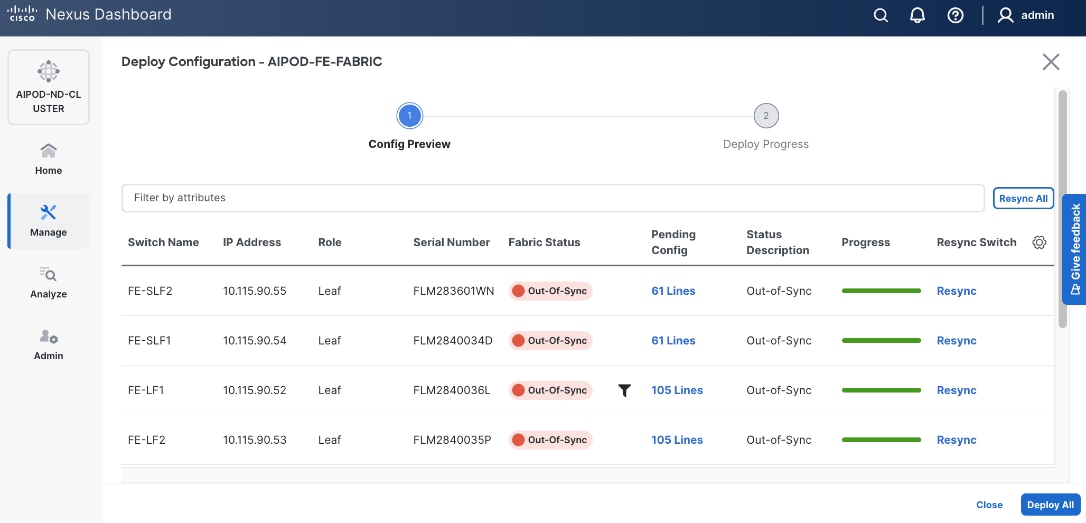

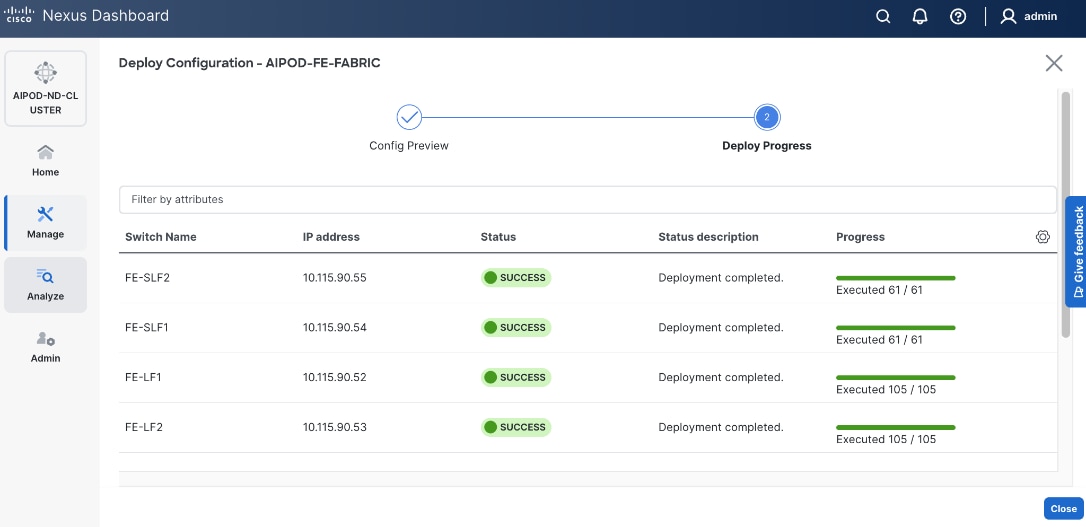

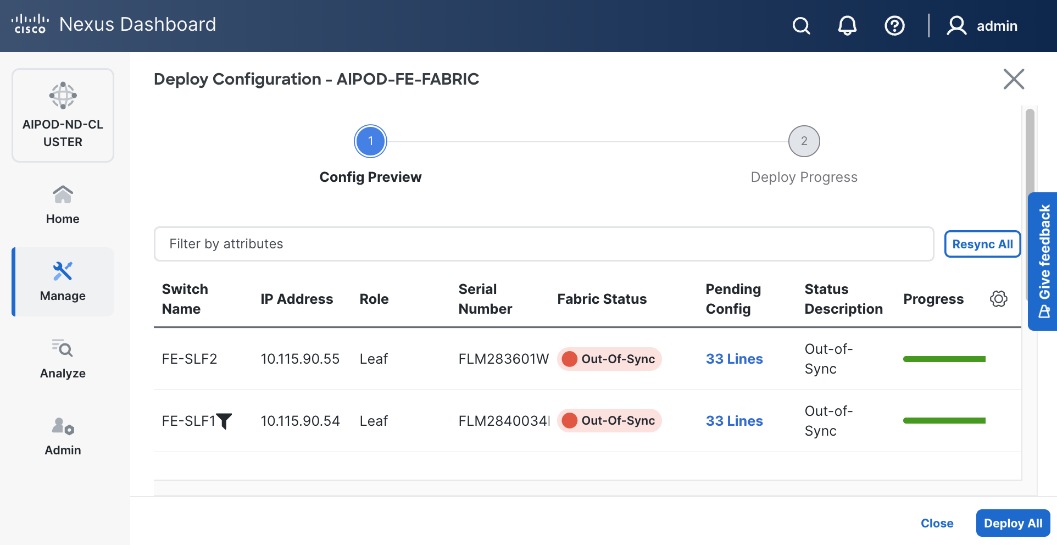

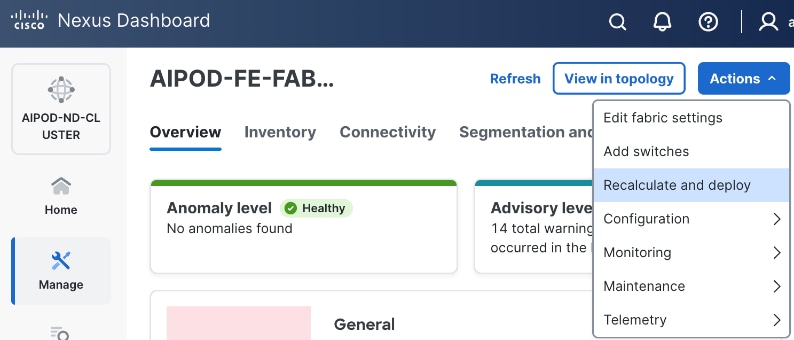

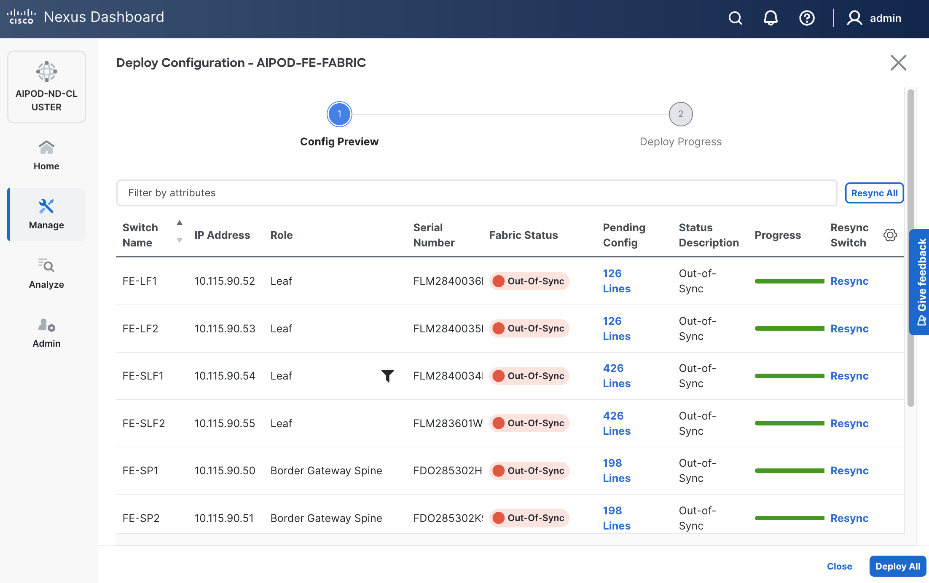

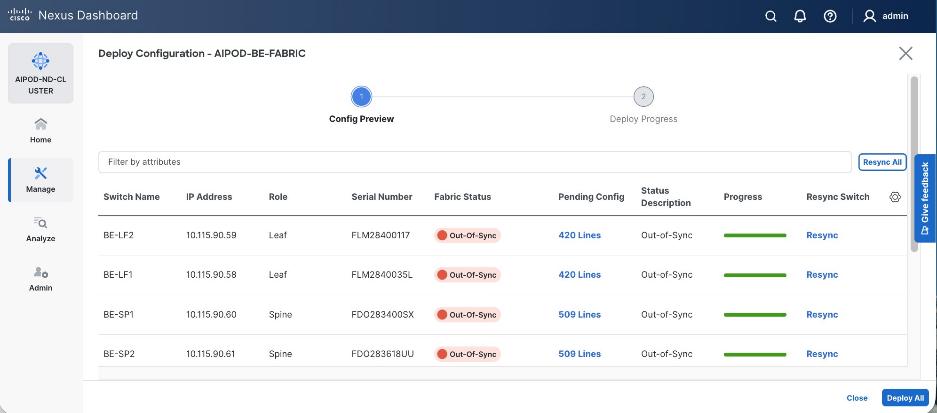

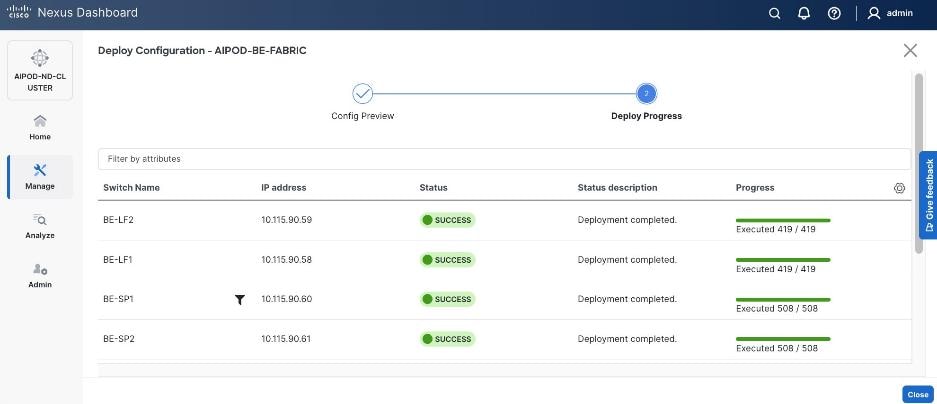

Step 27. Click the upper Actions button and select Recalculate and deploy from the drop-down list. If it says one is already in progress, wait a few minutes and repeat the steps. You should see the Fabric as Out-of-sync with some Pending Config (lines of config) change.

Step 28. Click Deploy All.

Step 29. Click Close.

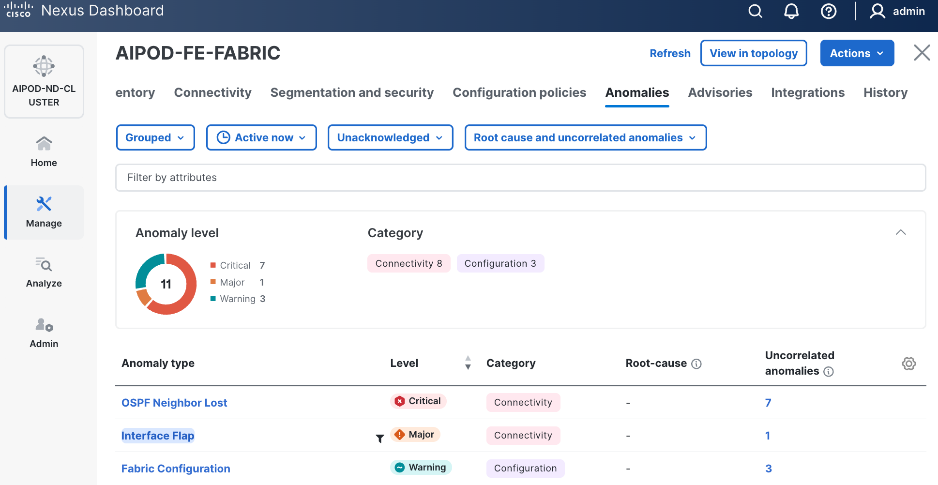

Step 30. ND will identify issues in hardware, connectivity, software etc., reflected by the Anomaly level. To view the flagged anomalies, go to Anomalies in the top menu bar. Address each anomaly to prevent issues later, either by resolving them or acknowledging them.

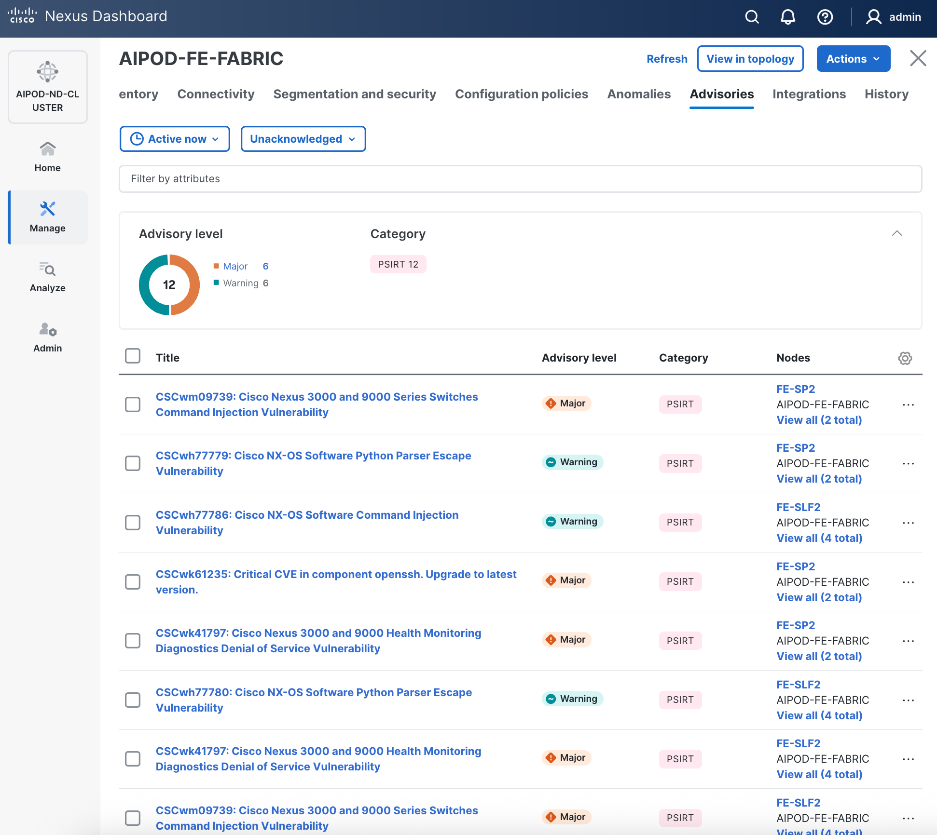

Step 31. Review the Advisories and resolve or acknowledge them.

Step 32. Evaluate and upgrade to Cisco recommended Nexus OS release.

Step 33. Now you can start attaching compute, storage and other end devices to the cluster.

Enable vPC Pairing on Compute/Management Leaf Switches in the FE Fabric

Assumptions and Prerequisites

● Compute/Management Leaf Switches discovered and added to the frontend fabric.

Setup Information

Table 10. Setup Parameters for FE Fabric: Enable vPC Pairing on Compute/Management Leaf Switches

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| vPC Pairing to Compute/Management Leaf Switches |

||

| Enable Virtual Peerlink |

Enabled |

|

| Leaf Switches |

|

|

| Leaf 1 |

FE-LF1 |

|

| Leaf 2 |

FE-LF2 |

|

Deployment Steps

To enable vPC pairing on the compute/management in the frontend fabric, follow the procedures below.

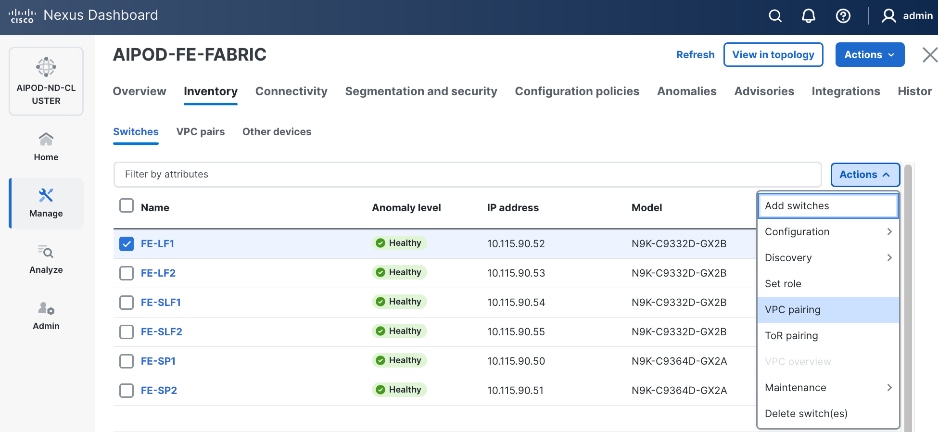

Procedure 1. Enable vPC pairing for compute/management leaf switches in the FE fabric

Step 1. From a browser, go to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

Step 3. Select the frontend fabric and click Inventory tab.

Step 4. To enable VPC pairing on the leaf switches that connect to UCS compute (GPU and management) nodes, select the first leaf switch in the leaf pair.

Step 5. Click the lower Actions button and select VPC pairing from the drop-down list.

Step 6. Select the VPC peer switch for the first compute/management leaf. Enable Virtual Peerlink.

Step 7. Click Save.

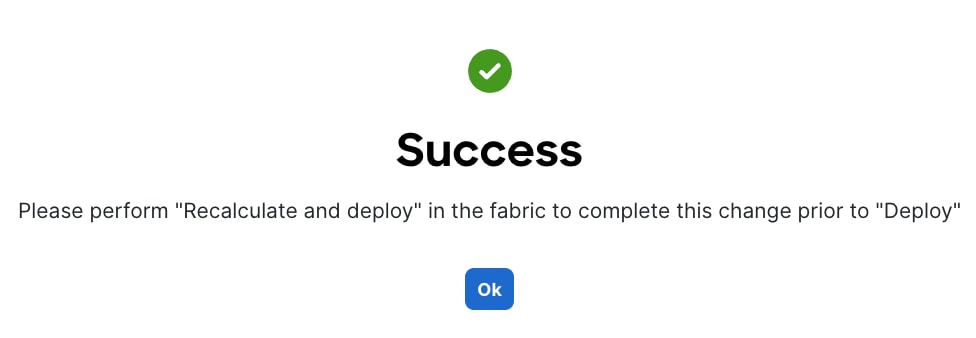

Step 8. Click Ok in the Success pop-up window.

Step 9. Select the two leaf switches in the vPC pair that are now Out-of-sync from the configuration change. Click the upper Actions button and select Recalculate and deploy from the drop-down list.

Step 10. Click Deploy All.

Step 11. When the configuration deployment completes successfully, click Close.

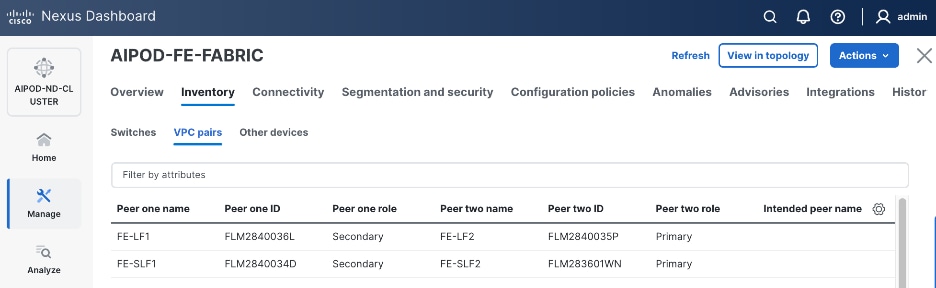

Step 12. In the Inventory tab, go to VPC pairs tab to see the newly created vPC pair.

Enable vPC Pairing on Storage Leaf Switches in the Frontend Fabric

Assumptions and Prerequisites

● Storage Leaf Switches discovered and added to the frontend fabric

Setup Information

Table 11. Setup Parameters for FE Fabric: Enable vPC Pairing on Storage Leaf Switches

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| vPC Pairing to Storage Leaf Switches |

||

| Enable Virtual Peerlink |

Enabled |

|

| Leaf Switches |

|

|

| Leaf 1 |

FE-SLF1 |

|

| Leaf 2 |

FE-SLF2 |

|

Deployment Steps

To enable vPC pairing for the storage leaf switches in the frontend fabric, follow the procedures below.

Procedure 1. Enable vPC pairing for storage leaf switches in the FE fabric

Step 1. Repeat the previous procedure to configure storage leaf switches in the frontend fabric as vPC peers.

Step 2. In the Inventory tab, go to VPC pairs tab to see the newly created vPC pairs.

Step 3. From the left navigation menu, go to Manage > Fabric and select the frontend fabric.

Step 4. Select the Topology tab. You should see the 2 Leaf switch pairs grouped in a box, indicating they are part of the same vPC pair.

Enable Layer 2 Connectivity to Management UCS X-Direct from FE fabric

To enable Layer 2 connectivity to management UCS X-Direct chassis, you will configure two vPCs, one for -A side and another for -B side. Each vPC will use multiple ports on each compute leaf switch pair to connect to -A and -B uplinks on the Cisco UCS X-Direct chassis.

Assumptions and Prerequisites

● Compute/management leaf switches deployed as a vPC pair

● Management UCS-X Direct cabled using multiple uplinks to frontend compute/management leaf switches

Setup Information

Table 12. Setup Parameters for FE Fabric: Layer 2 Connectivity to Management UCS X–Direct

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| Leaf Switches |

FE-LF1, FE-LF2 |

|

| Management UCS |

UCS X-Direct with (-A, -B) uplinks; Both uplinks are dual-homed to FE-LF1 & FE-LF2 |

With multiple servers |

| Virtual Port Channel (vPC) |

To UCS X-Direct |

Management UCS-X Direct Chassis |

| vPC/PC1 - ID |

15 |

To UCS X-Direct: Side-A |

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/5, 1/7 |

FI-A: Ports 1/1-4 (PC-11) |

| vPC/PC2 – ID |

16 |

To UCS X-Direct: Side-B |

| Ports |

1/6, 1/8 |

FI-B: Ports 1/1-4 (PC-12) |

Deployment Steps

To enable Layer 2 connectivity to management Cisco UCS X-Direct chassis from the frontend fabric, follow the procedures below using the setup information provided in this section.

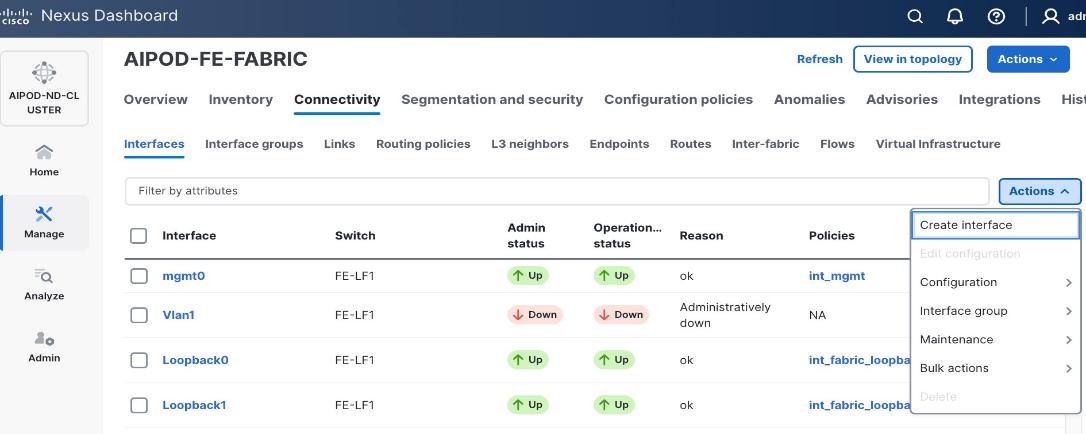

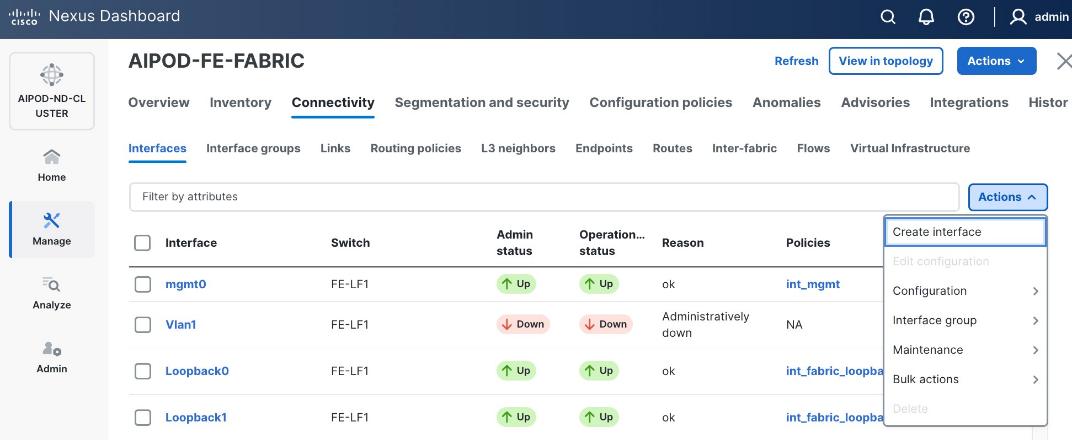

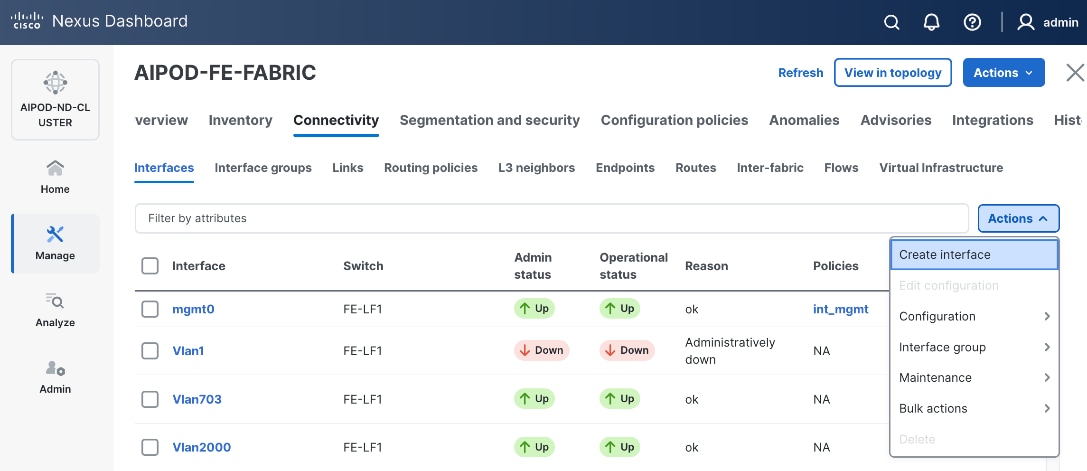

Procedure 1. Deploy first vPC to Management UCS X-Direct

Step 1. From a browser, go to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

Step 3. Select the frontend fabric and go to Connectivity > Interfaces tab.

Step 4. Click the lower Actions button and select Create interface.

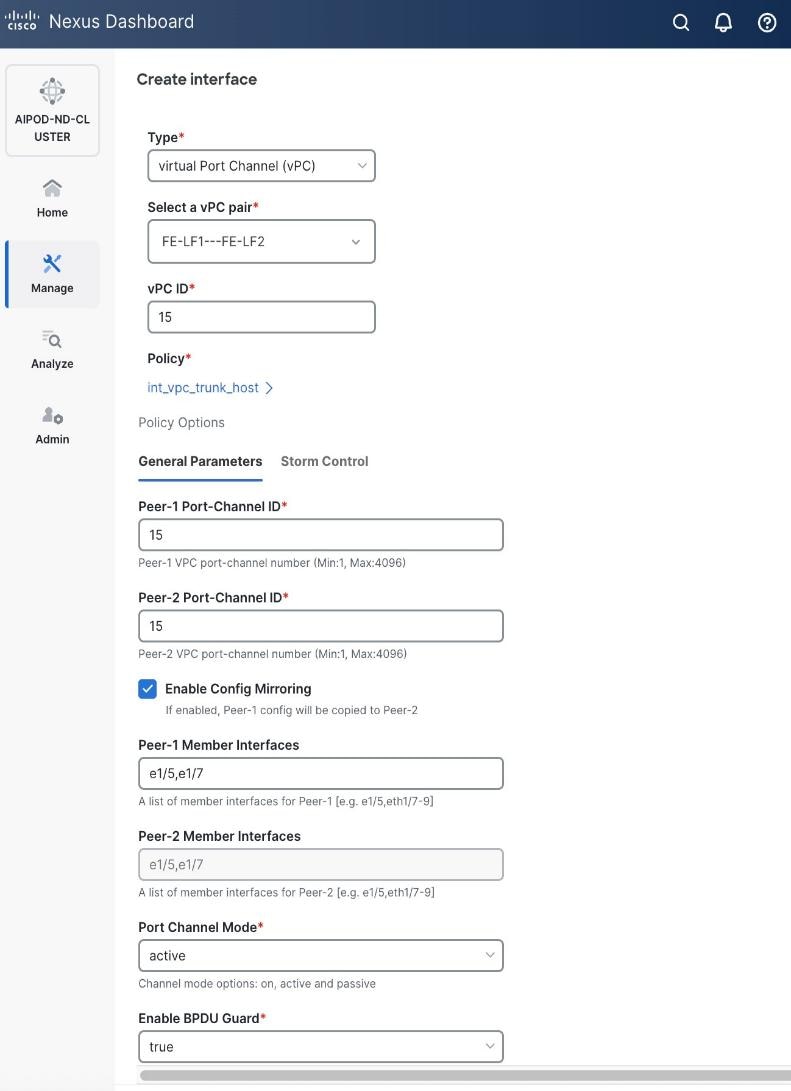

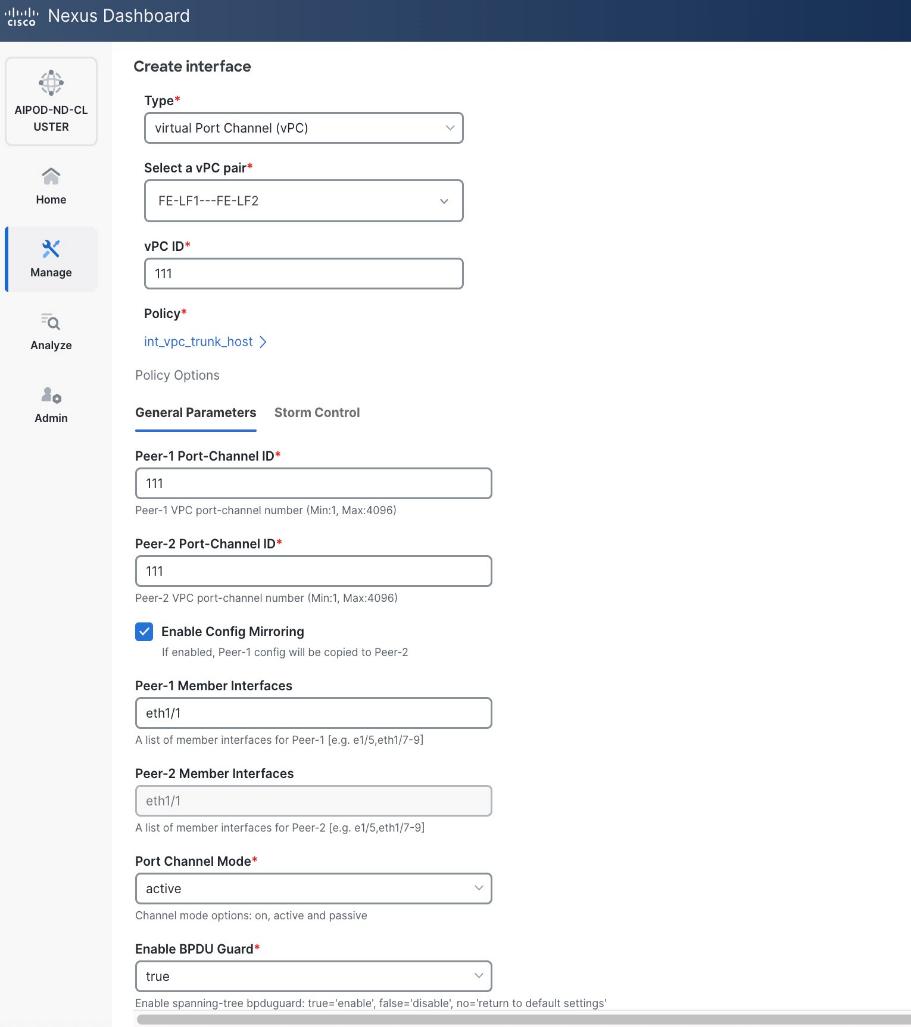

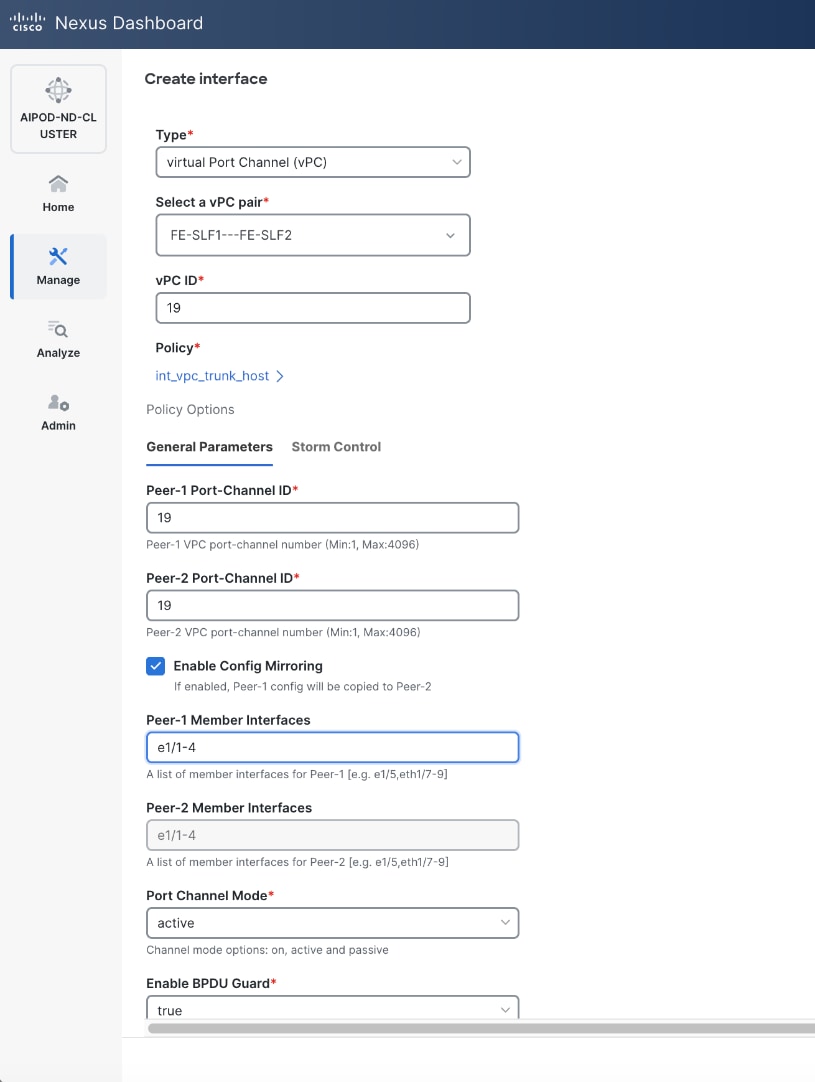

Step 5. In the Create interface window:

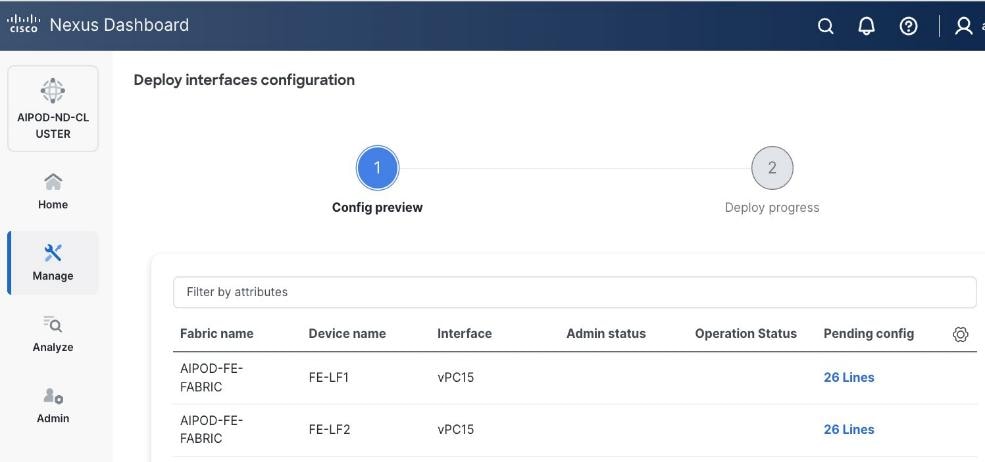

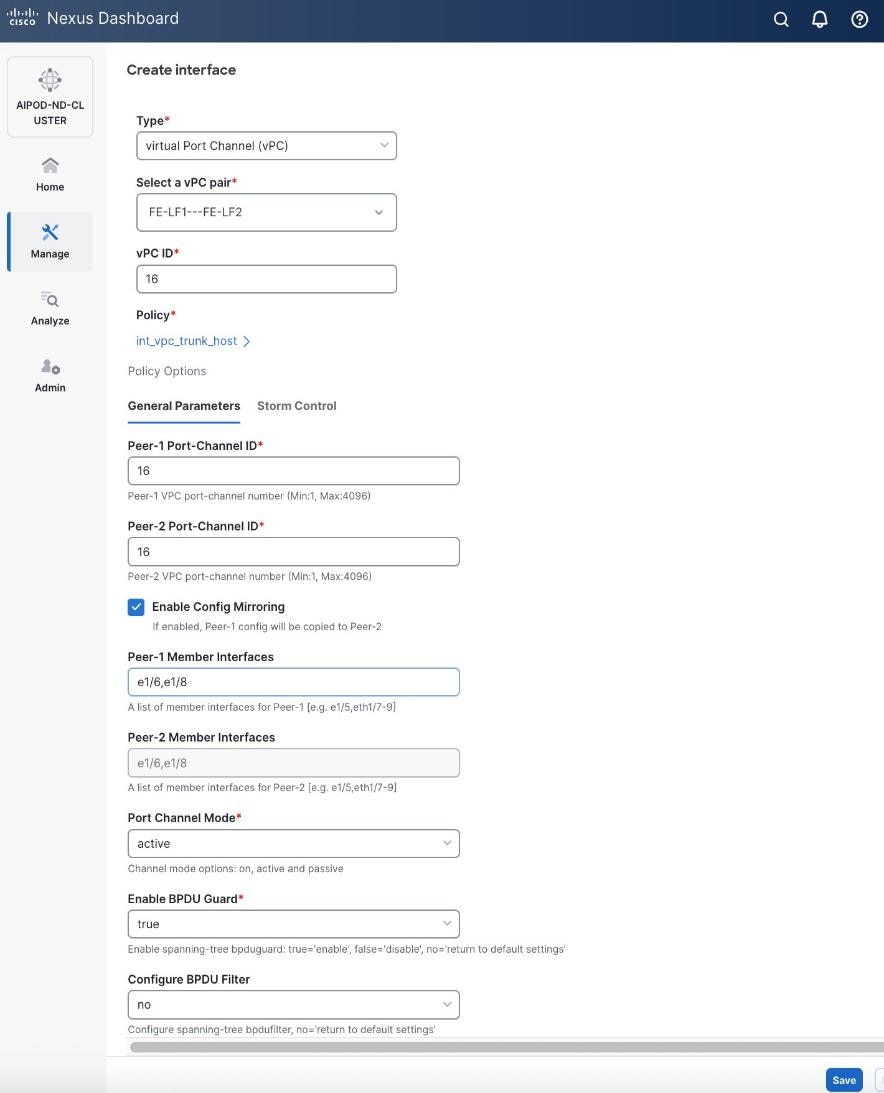

● Specify the TypeType of interface as virtual Port Channel (vPC)virtual Port Channel (vPC) from the drop-down list.

● For the Select a vPC pairSelect a vPC pair, select the compute leaf switch vPC pair from the dropdown list.

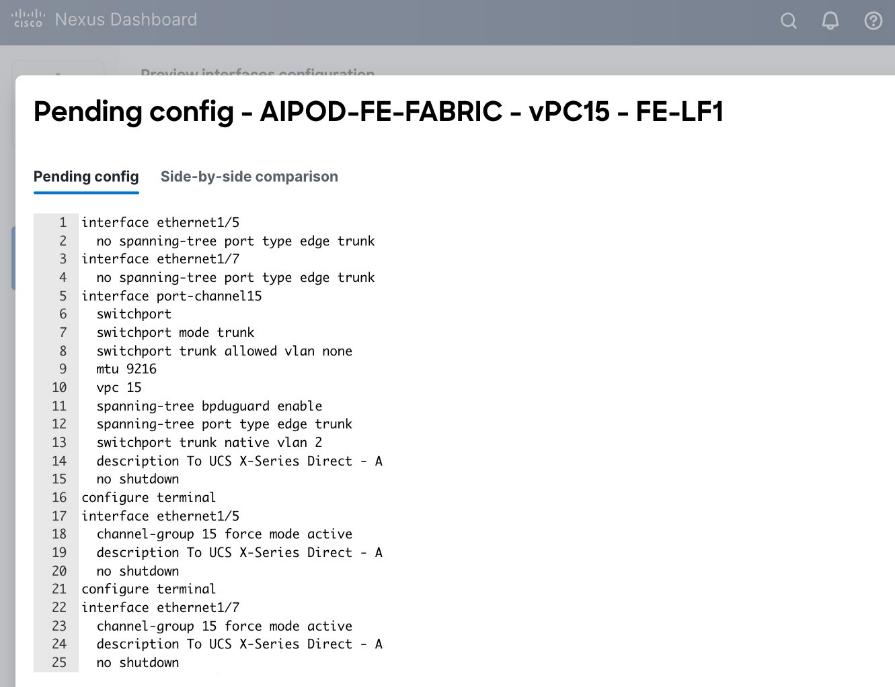

● Specify a vPC IDvPC ID for the firstfirst vPC to the UCS X-Direct (-A side-A side). Port Channel IDs from each switch to the first UCS node should match the vPC ID (see screenshot below).

● Leave the Policy as int_vpc_trunk_host.

● EnableEnable checkbox for Config MirroringConfig Mirroring to configure both vPC peer switches identically.

● Specify Peer-1 Member InterfacesPeer-1 Member Interfaces that connects to first UCS node.

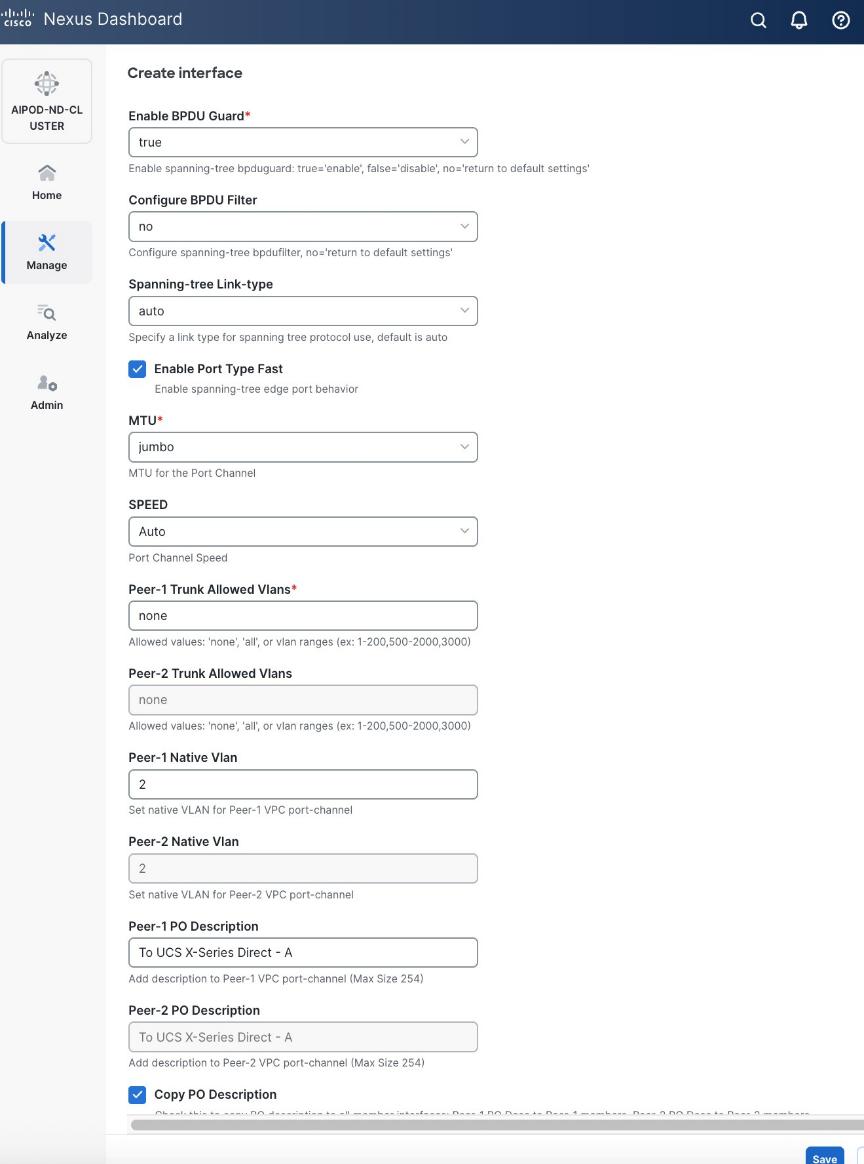

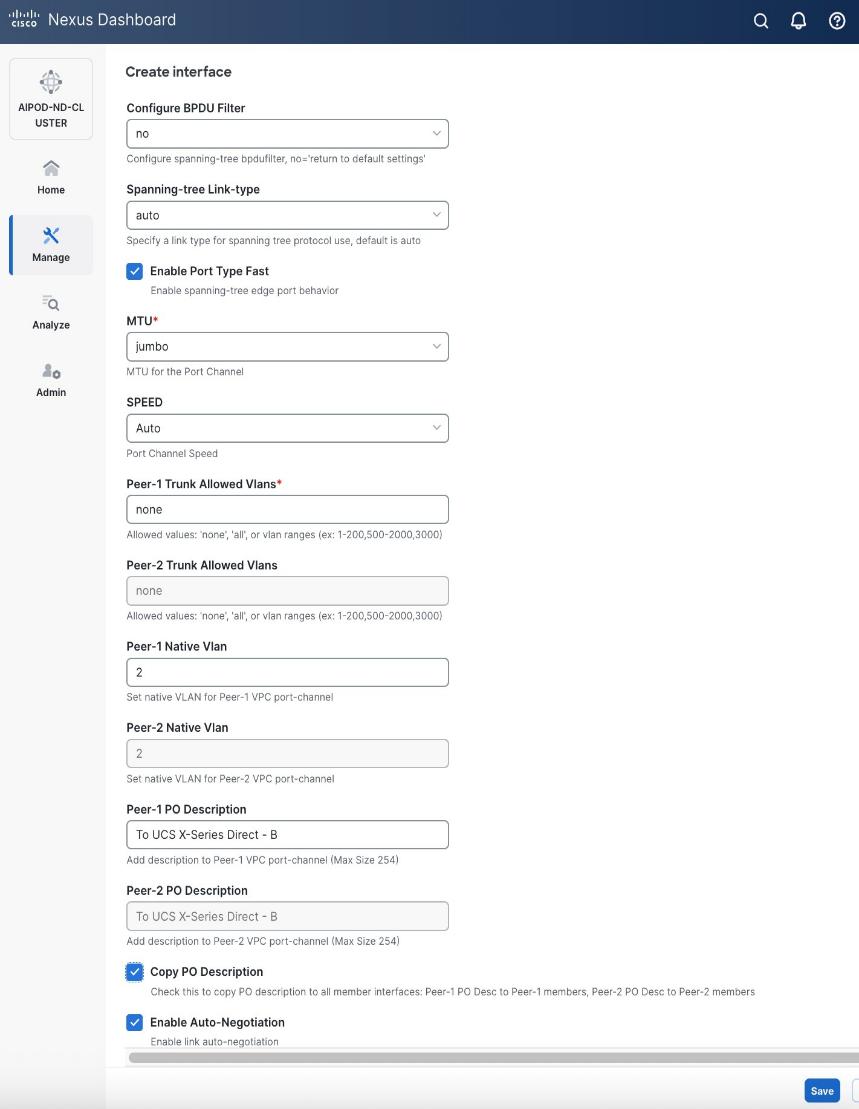

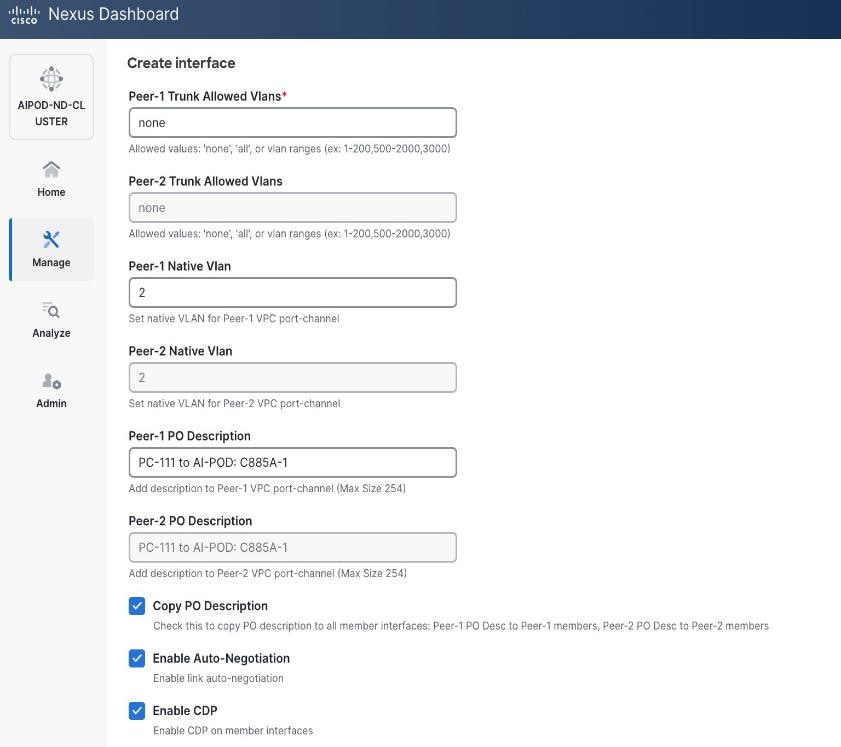

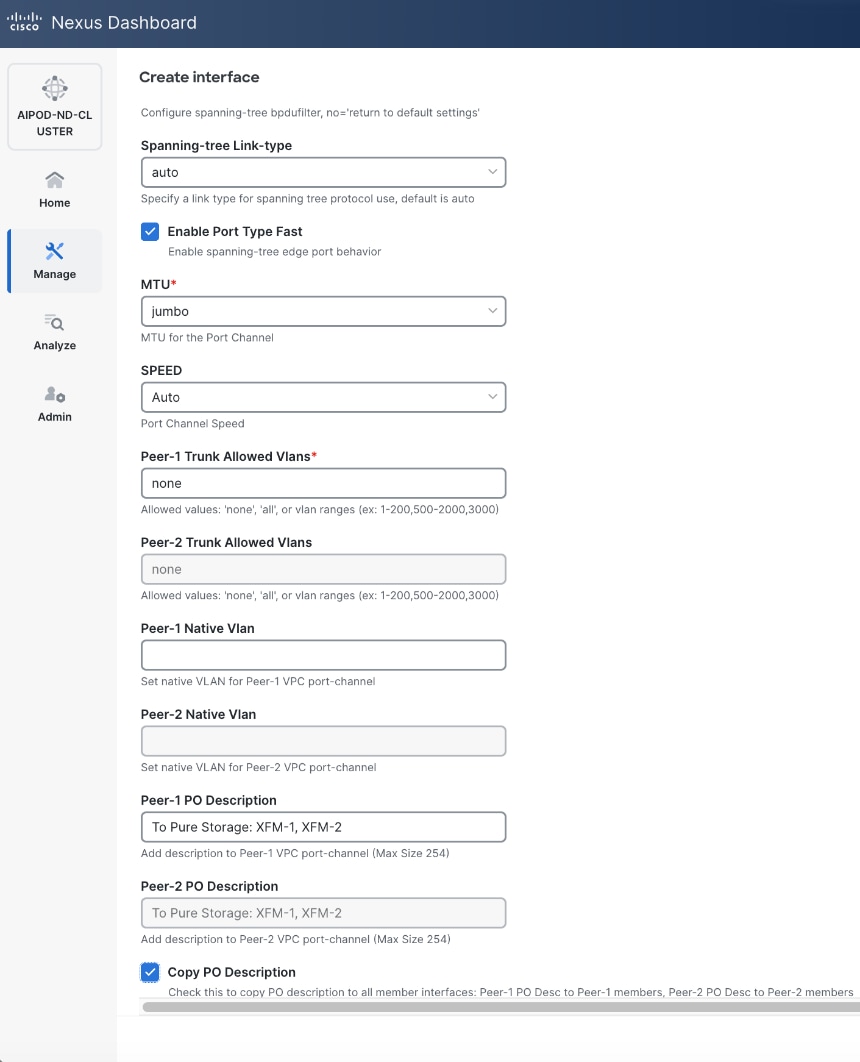

● Leave other fields as is.

● Scroll down and fill remaining fields: Native VLANNative VLAN, Peer-1 PO Description, Peer-1 PO Description, and select the checkbox for Copy PO Description Copy PO Description to copy the description to second vPC peer’s Port Channel.

Step 6. Click Save.

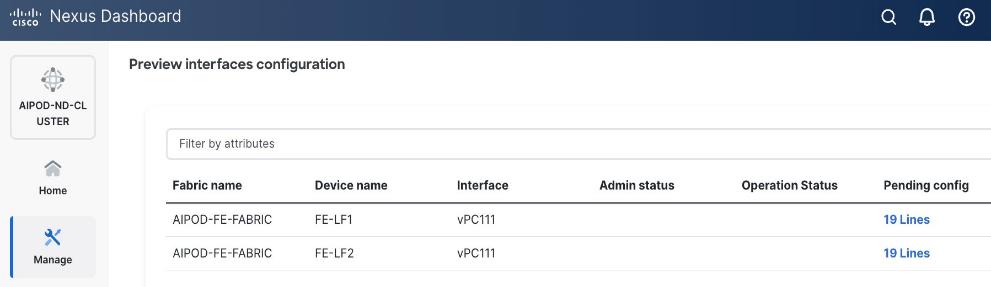

Step 7. Click Preview.

Step 8. Click Close and then click Cancel.

Step 9. Click Deploy. The Pending Config is the configuration shown in the previous step.

Step 10. Click Deploy Config.

Step 11. Verify that all the interfaces and port-channels are up on each switch in the vPC leaf pair that connects to the UCS X-Direct (-A side). It may take a few minutes for the vPC to go from Not discovered to consistent state.

Procedure 2. Deploy second vPC to Management UCS X-Direct

Step 1. Repeat the previous procedure for the second vPC to UCS X-Direct (-B side).

Step 2. Click Save.

Step 3. Click Deploy then click Deploy Config.

Step 4. Verify that all the interfaces and port-channels are up on each switch in the vPC leaf pair that connects to the UCS X-Direct (-B side). It may take a few minutes for the vPC to go from Not discovered to consistent state.

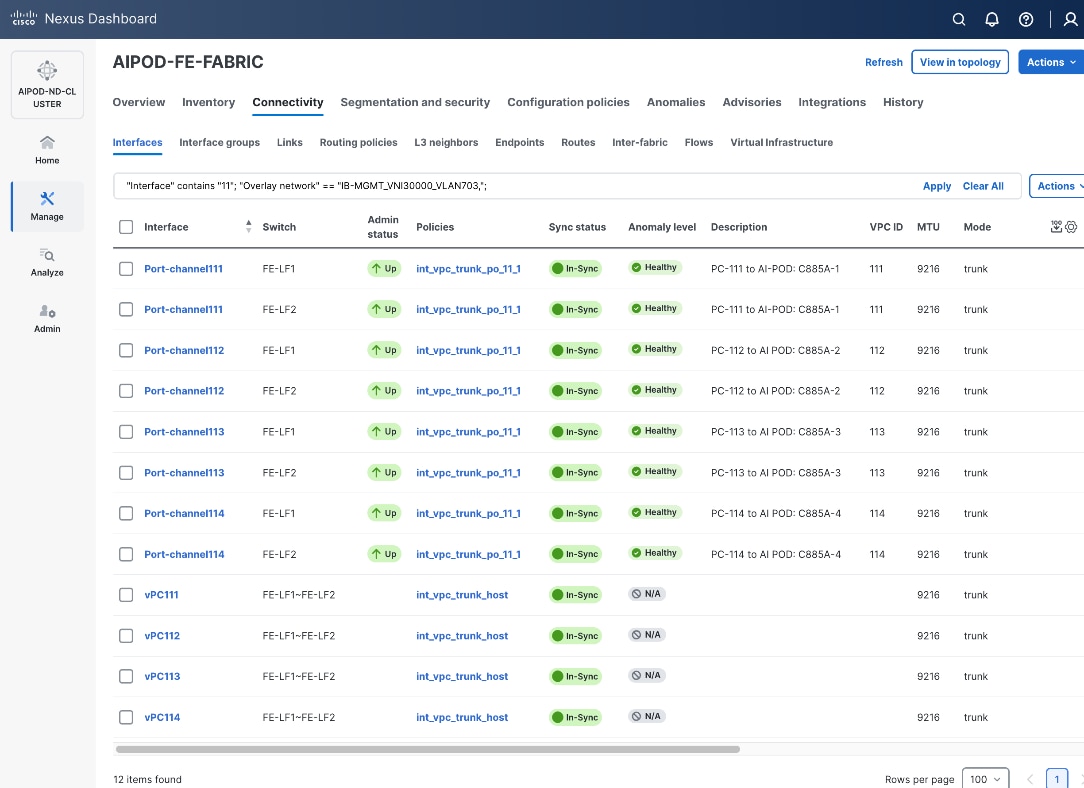

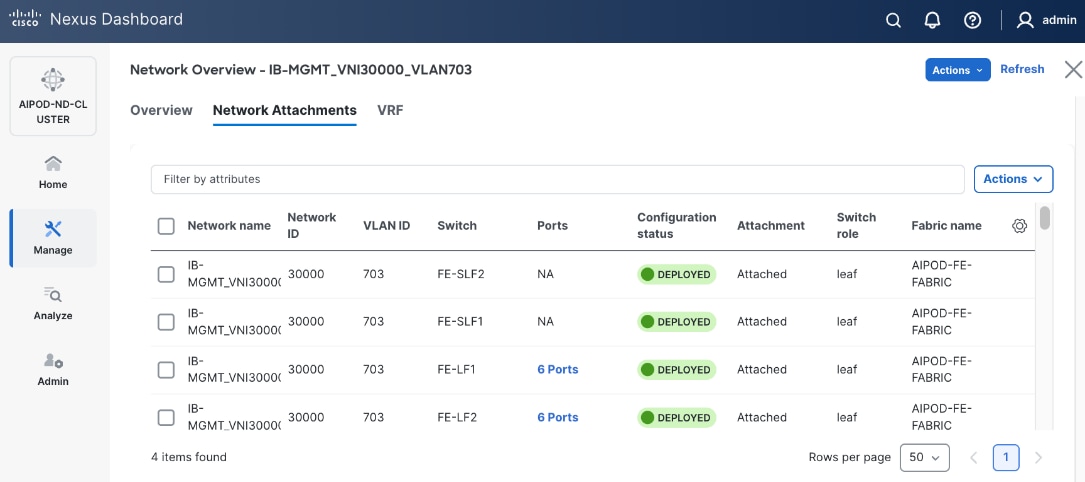

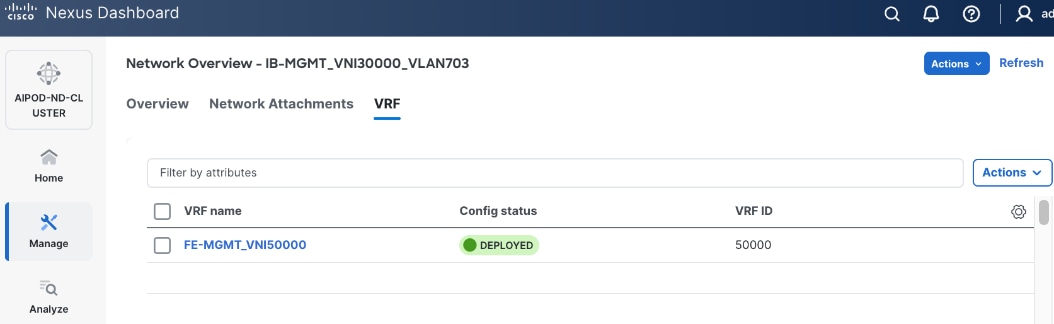

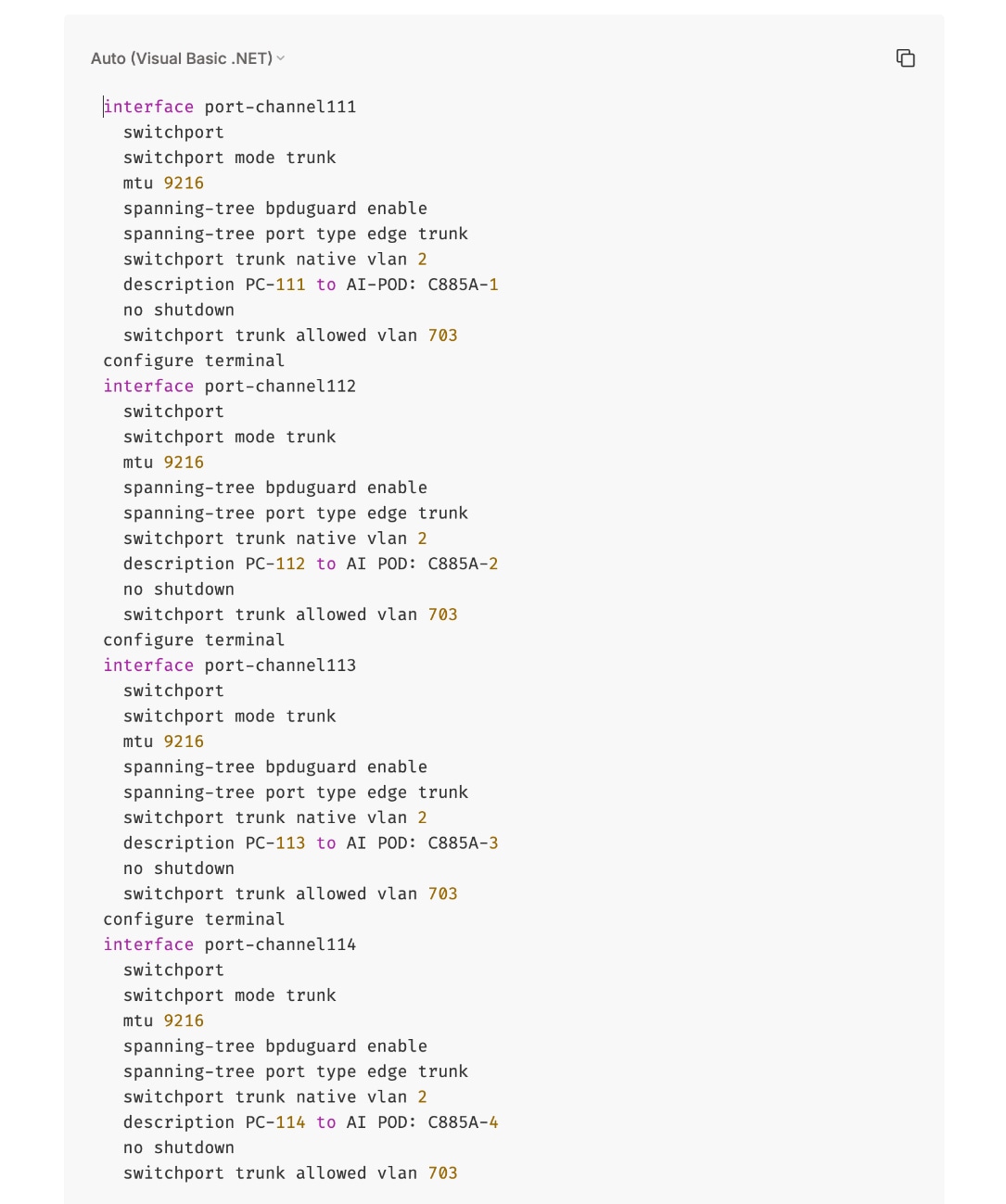

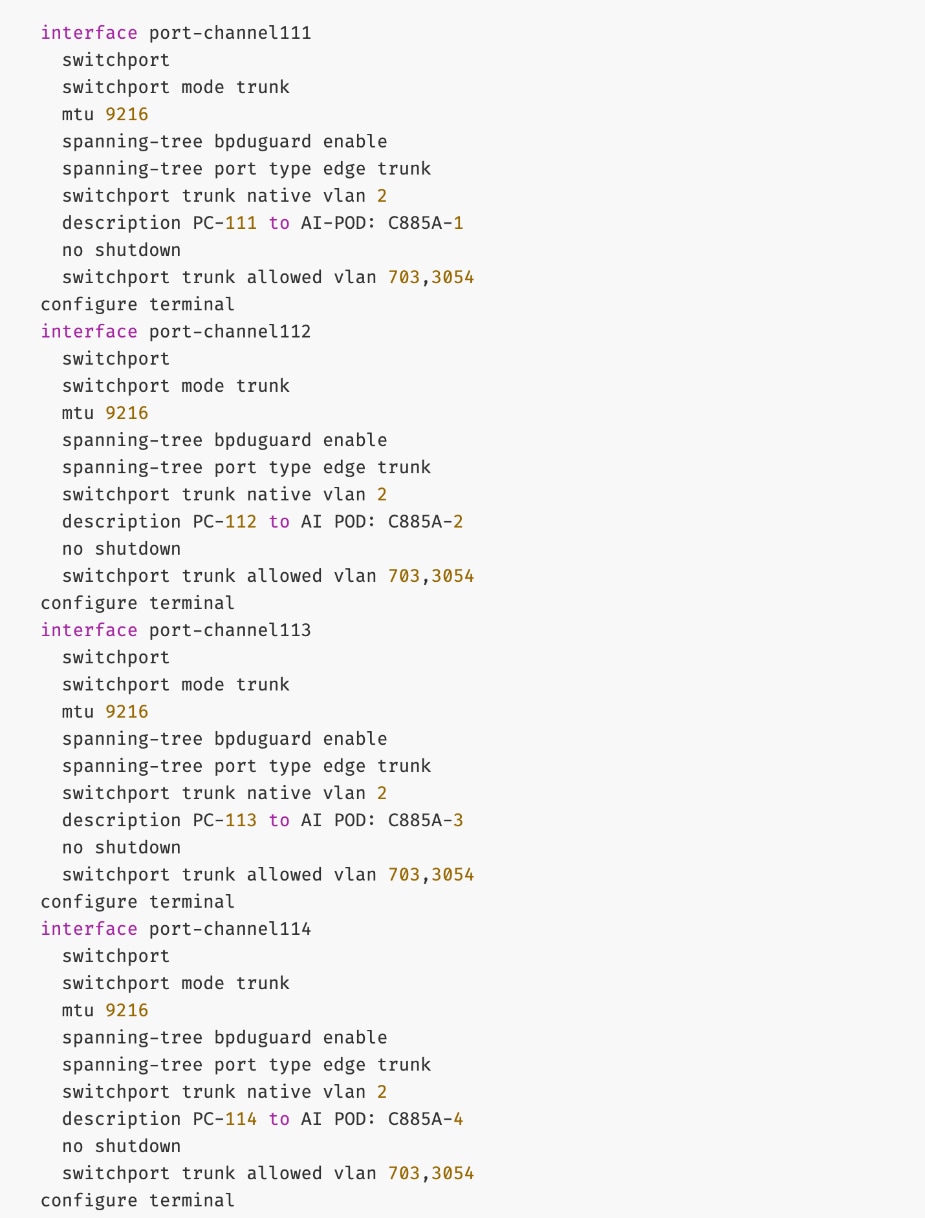

Enable Layer 2 Connectivity to UCS GPU Nodes from FE Fabric

To enable layer 2 connectivity to UCS GPU nodes, you will be configuring four vPCs, one per Cisco UCS C885A node. Each vPC will use one port on each switch in the compute leaf pair to connect to the UCS node.

Assumptions and Prerequisites

● Compute/management leaf switches deployed as a vPC pair

● Frontend NICs on UCS GPU nodes dual-homed to compute/management leaf switches

Setup Information

Table 13. Setup Parameters for FE Fabric: Layer 2 Connectivity to UCS GPU Nodes

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| Leaf Switches |

FE-LF1, FE-LF2 |

|

| UCS Nodes |

4 x UCS C885A GPU Nodes, each dual-homed to FE-LF1 & FE-LF2 |

|

| Virtual Port Channel (vPC) |

To UCS C885As |

UCS GPU Nodes |

| vPC/PC1 - ID |

111 |

|

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/1 |

On each Leaf switch |

| vPC/PC2 – ID |

112 |

|

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/2 |

On each Leaf switch |

| vPC/PC3 - ID |

113 |

|

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/3 |

On each Leaf switch |

| vPC/PC4 – ID |

114 |

|

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/4 |

On each Leaf switch |

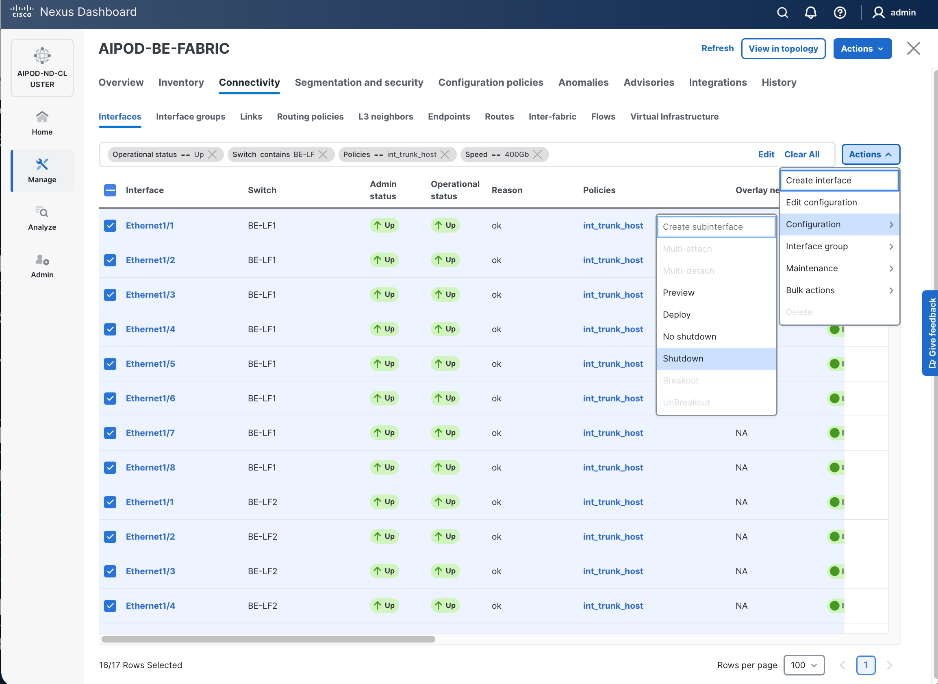

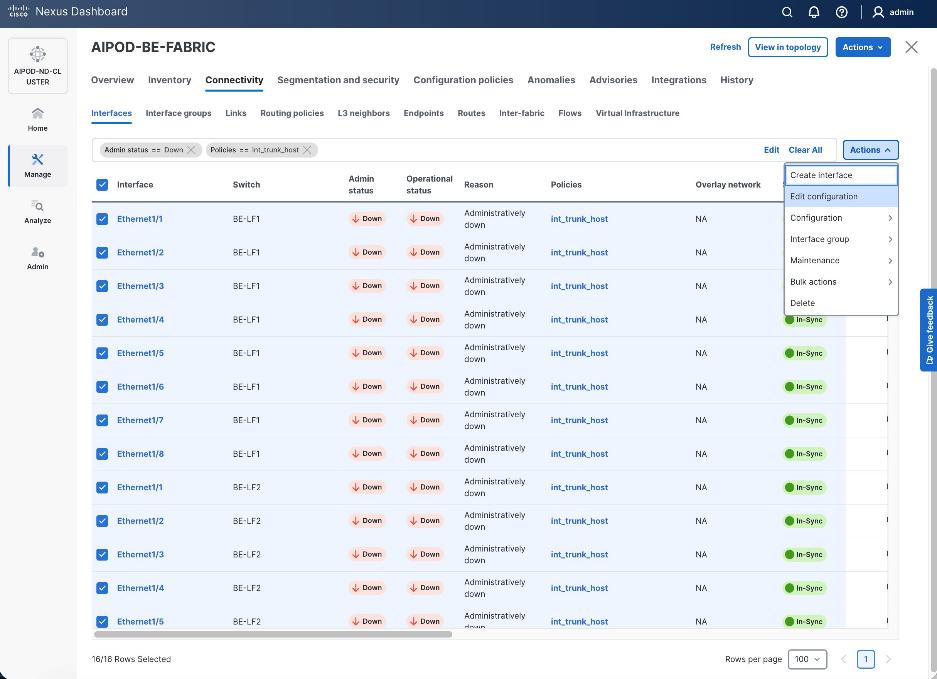

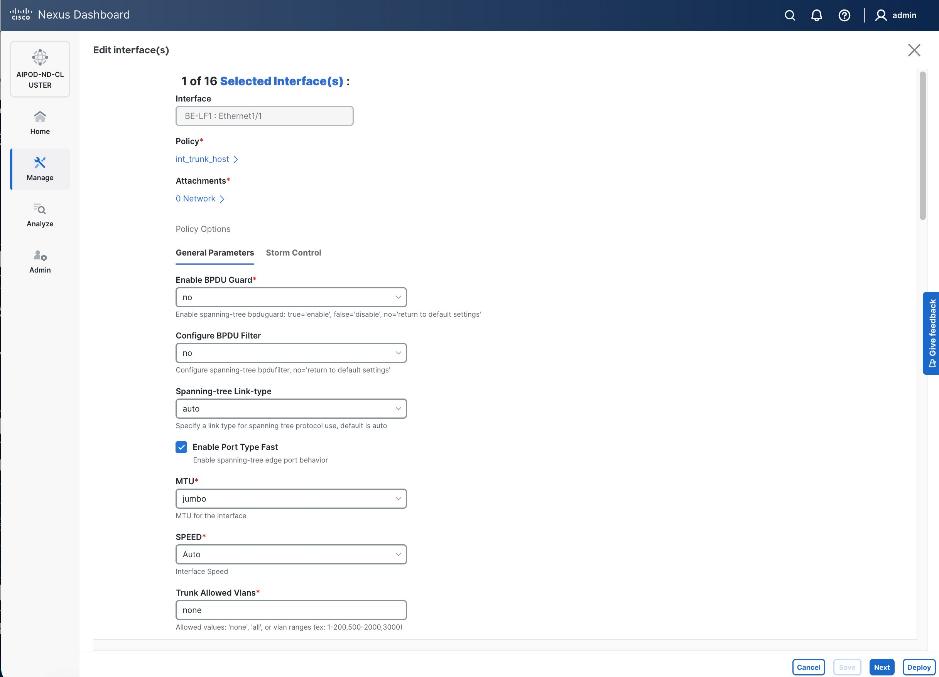

Deployment Steps

To enable Layer 2 connectivity from the frontend fabric to UCS C885A GPU nodes, follow the procedures below using the setup information provided in this section.

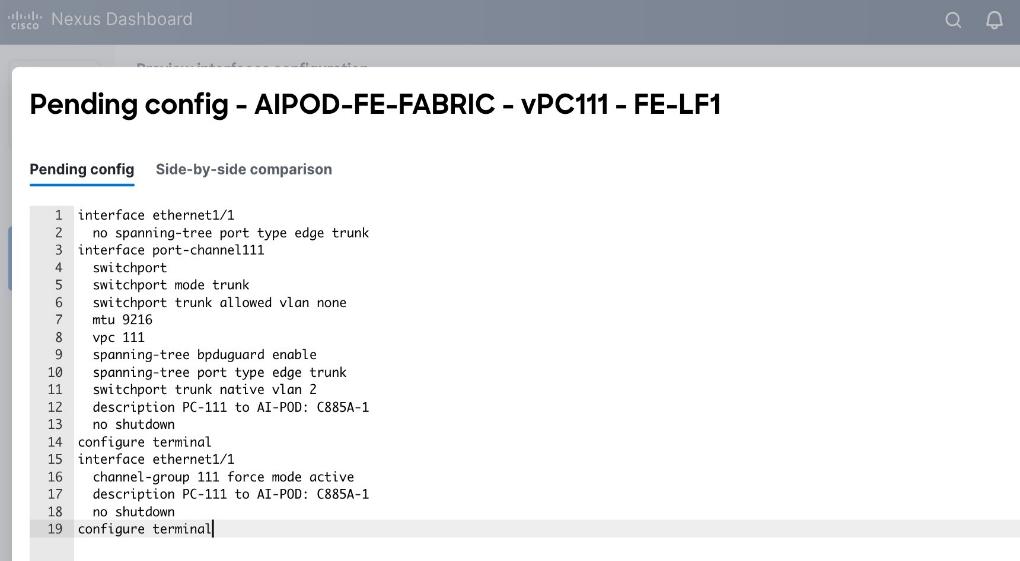

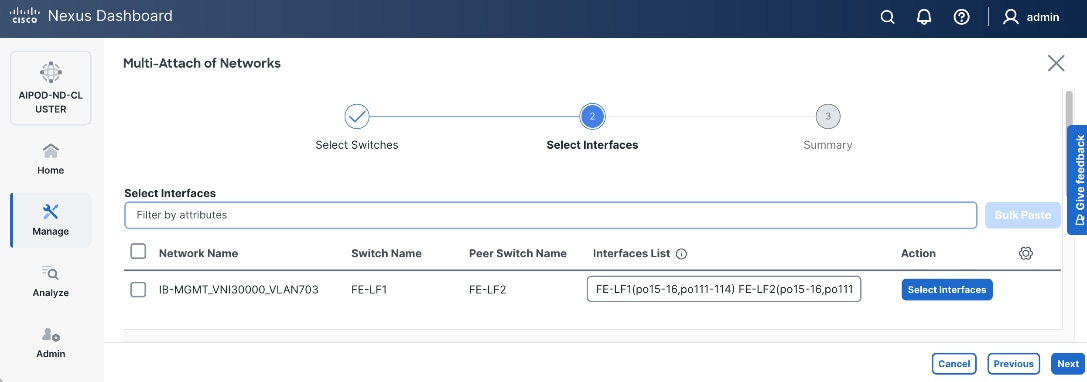

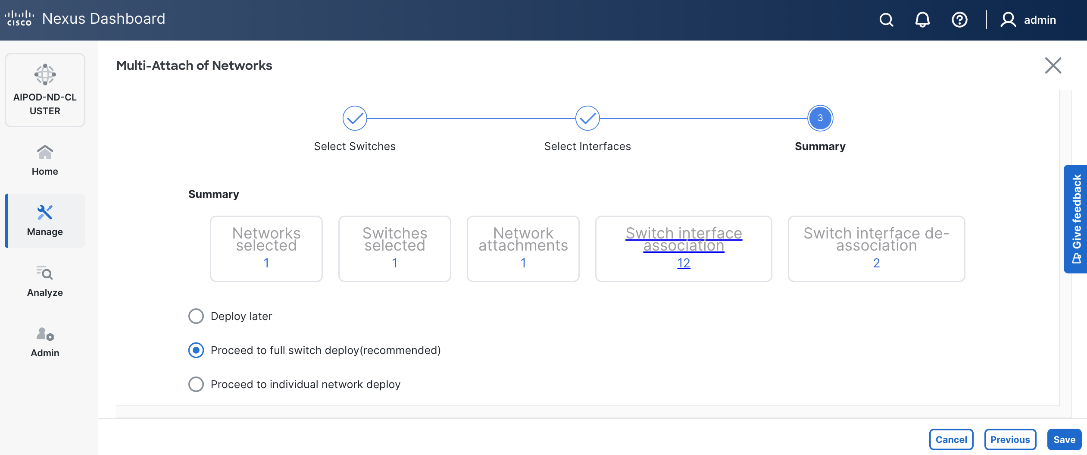

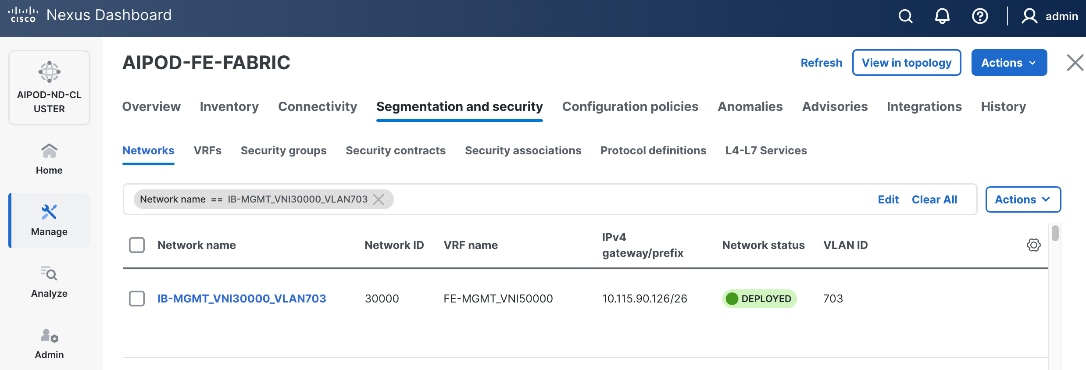

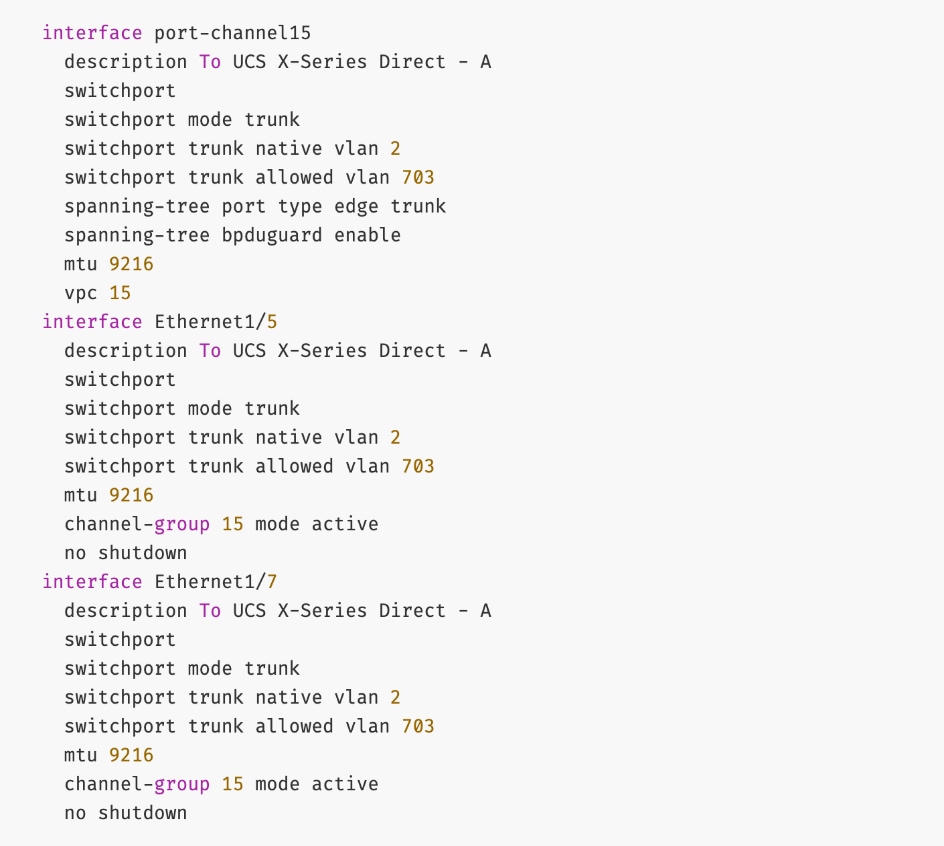

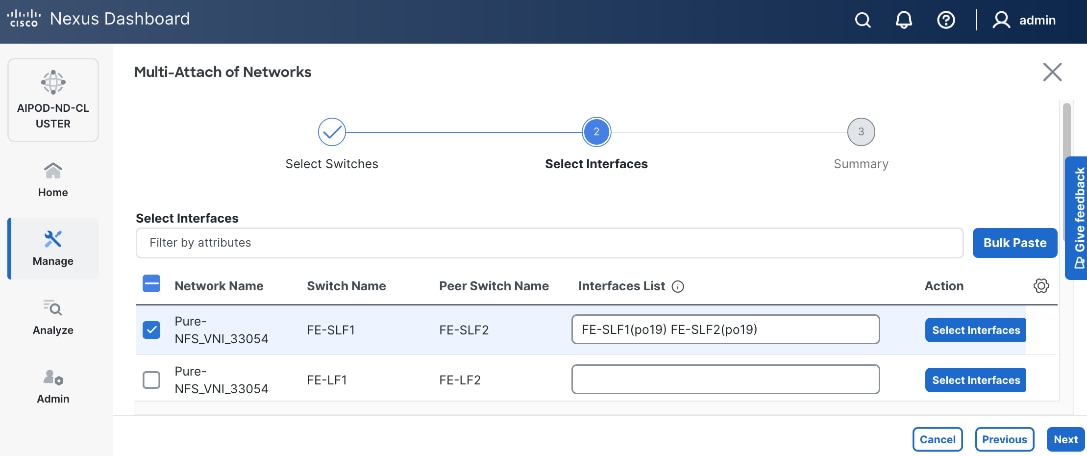

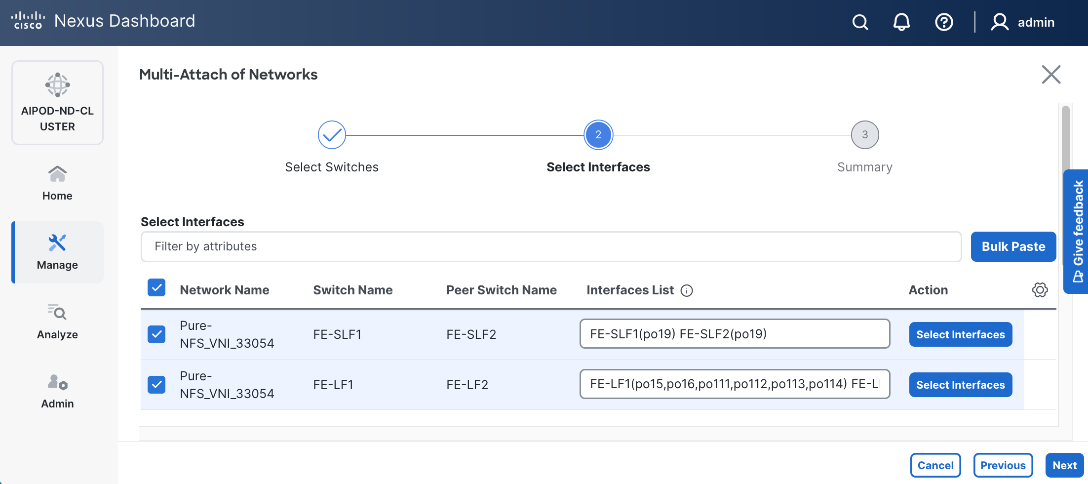

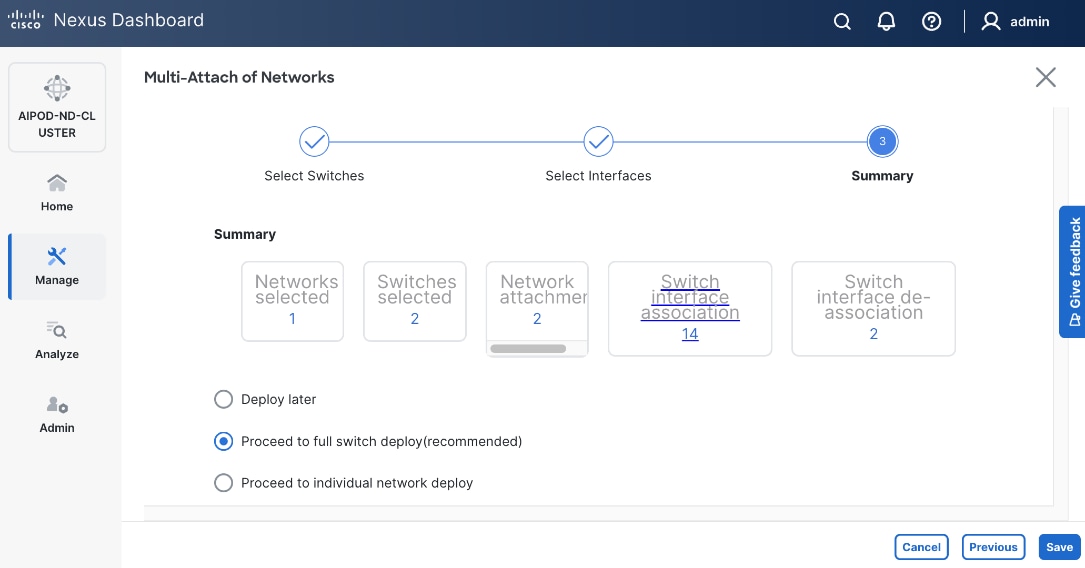

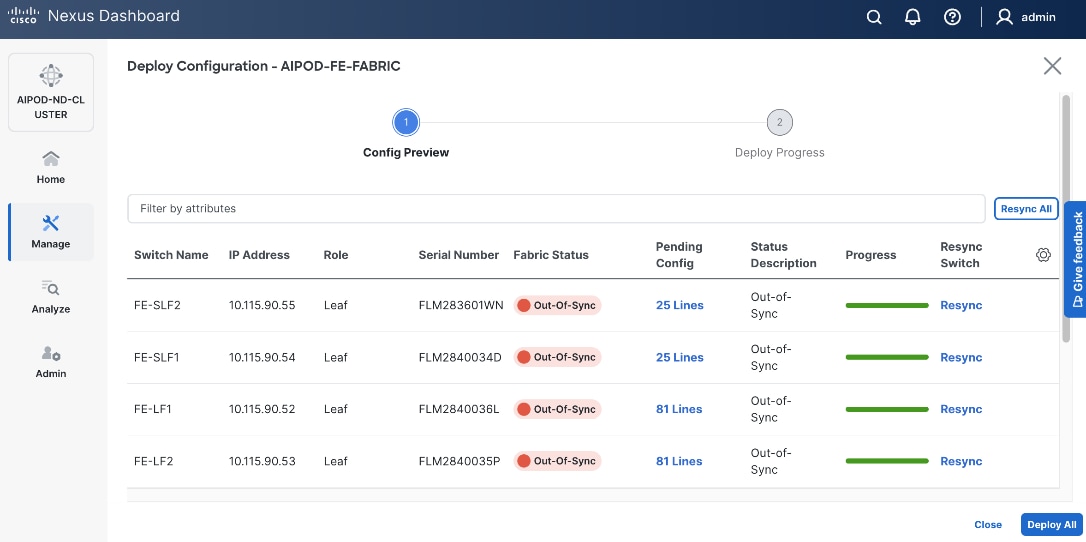

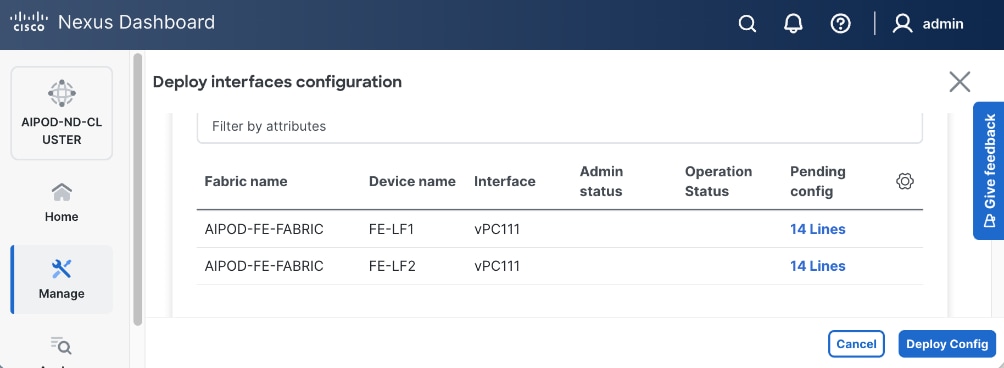

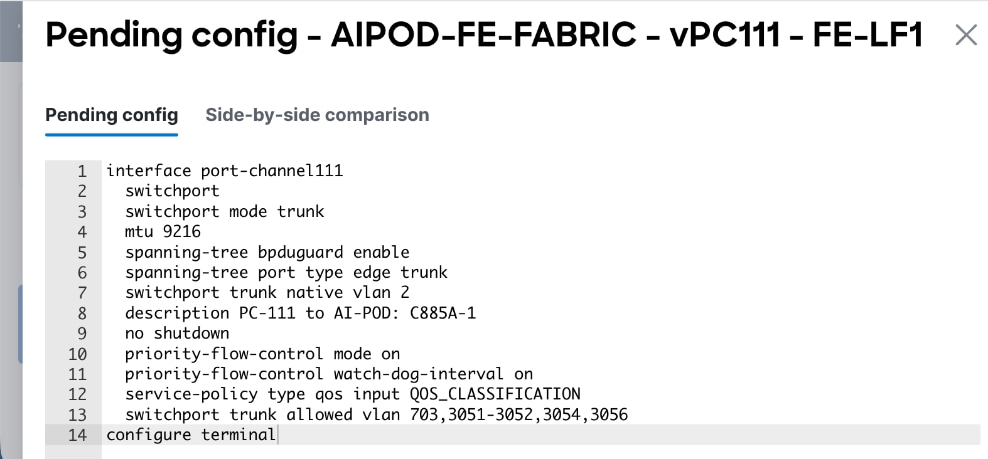

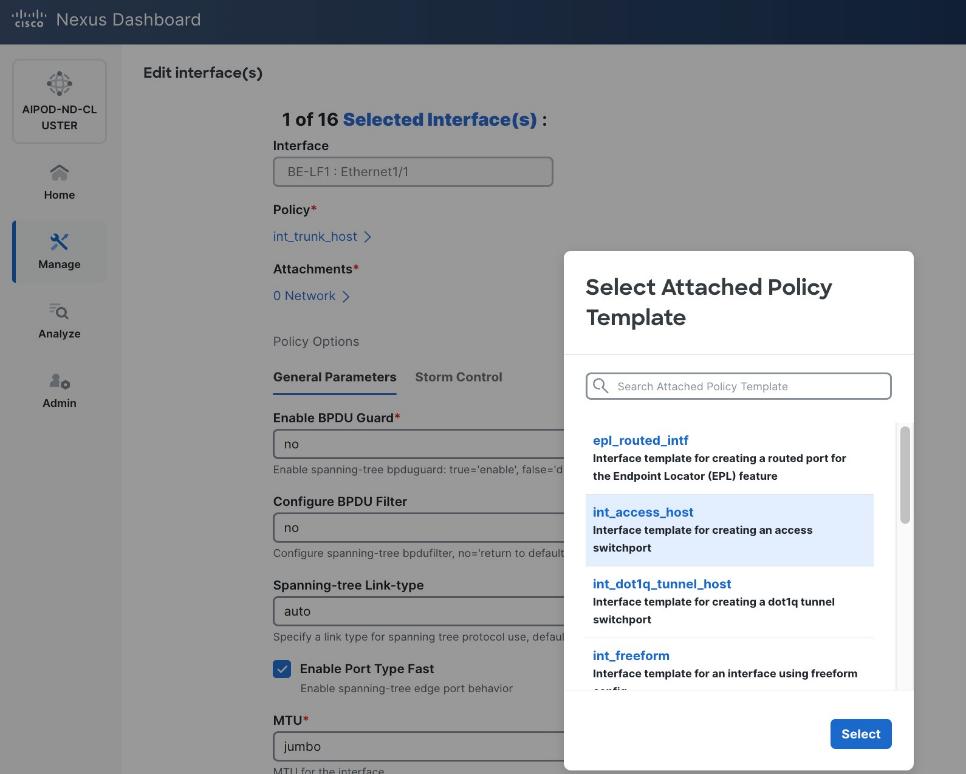

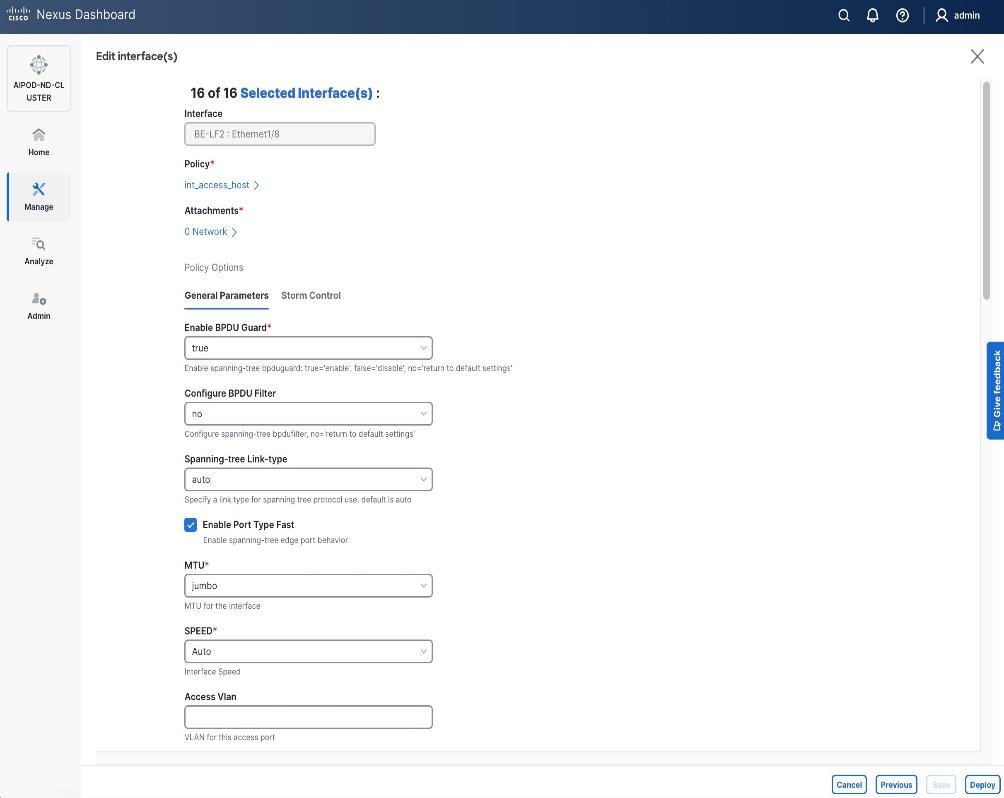

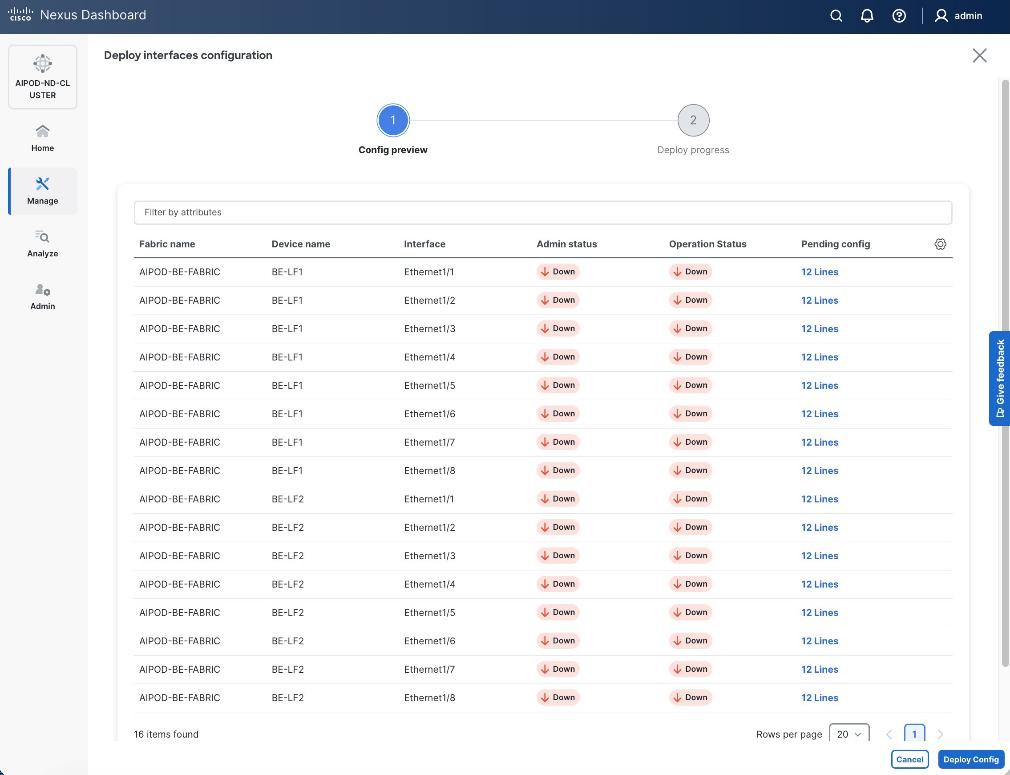

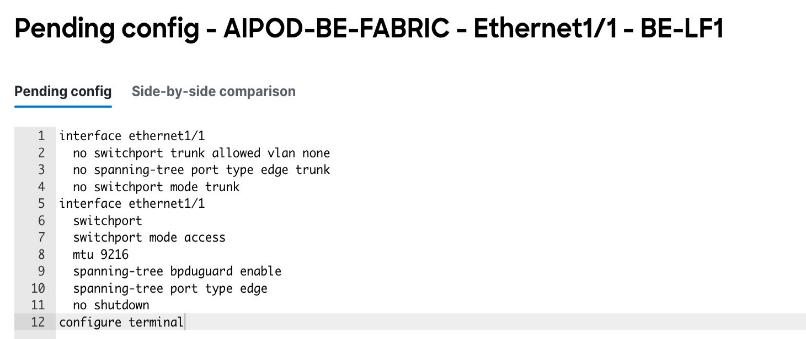

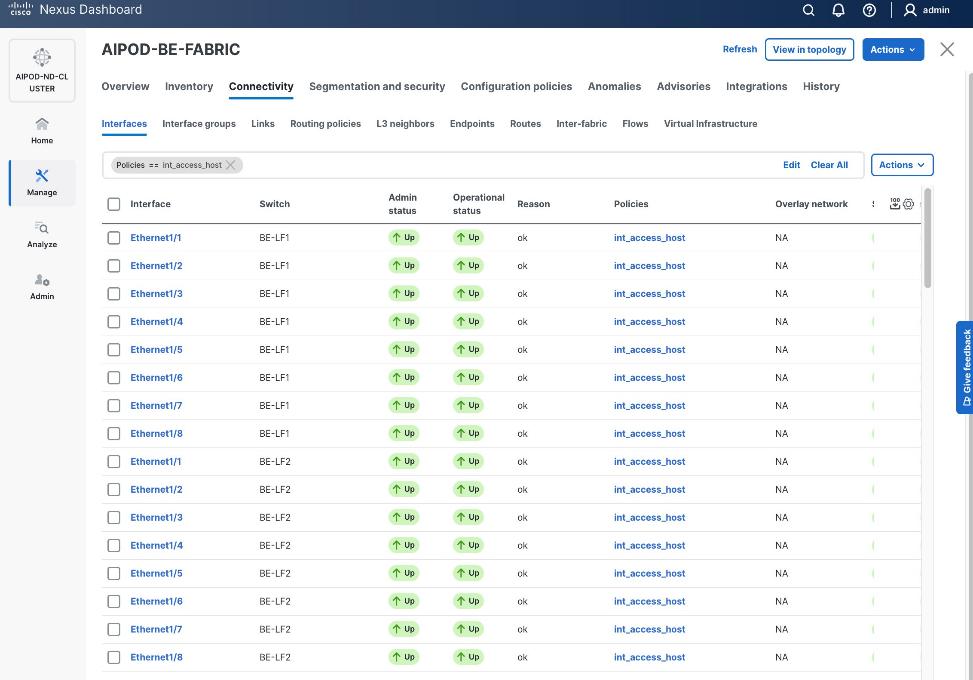

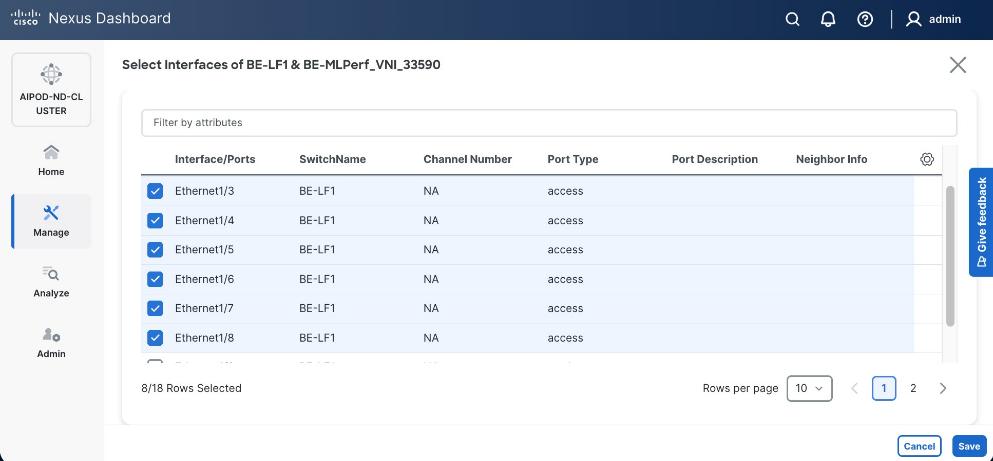

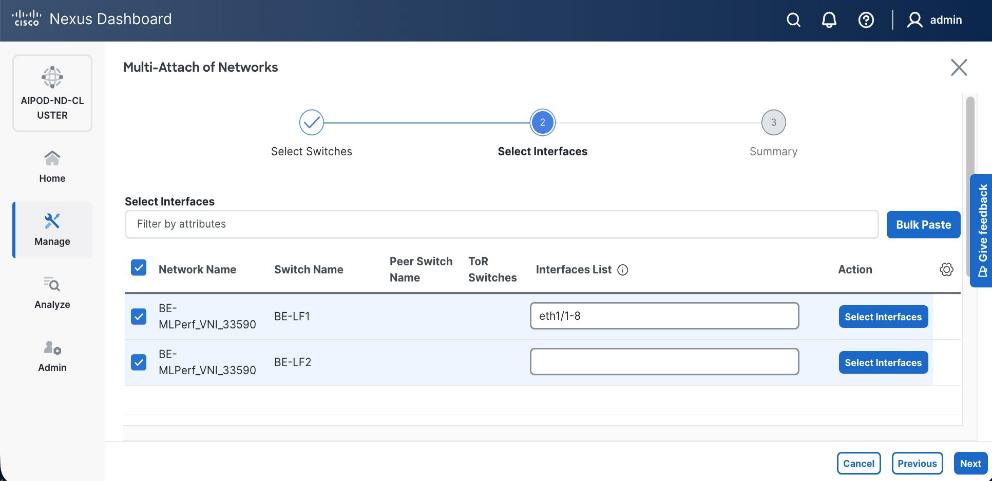

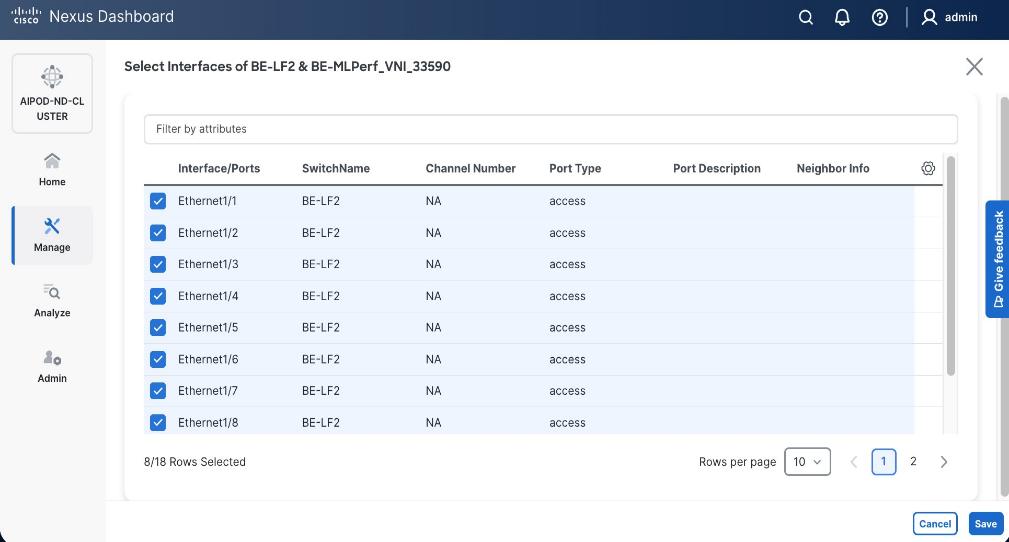

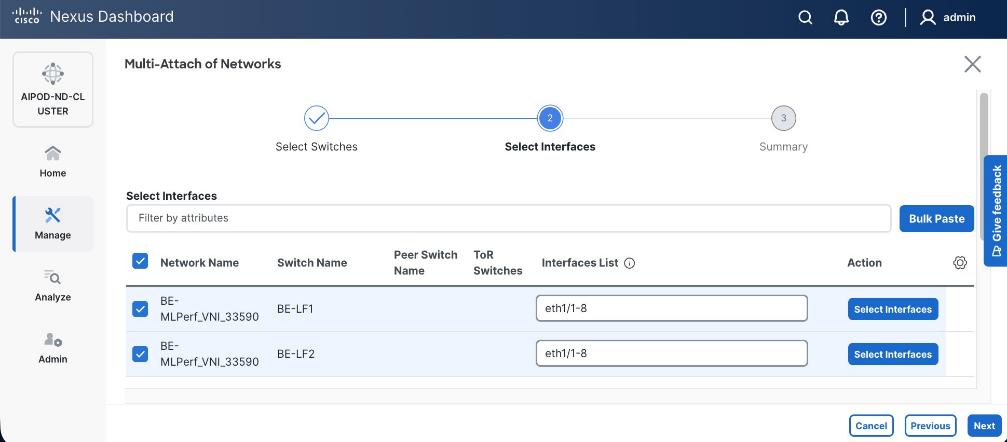

Procedure 1. Deploy first vPC to first UCS C885A GPU node

Step 1. From a browser, go to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

Step 3. Select the frontend fabric and go to Connectivity > Interfaces tab.

Step 4. Click the lower Actions button and select Create interface.

Step 5. In the Create interface window:

● Specify the TypeType of interface as virtual Port Channel (vPC)virtual Port Channel (vPC) from the drop-down list.

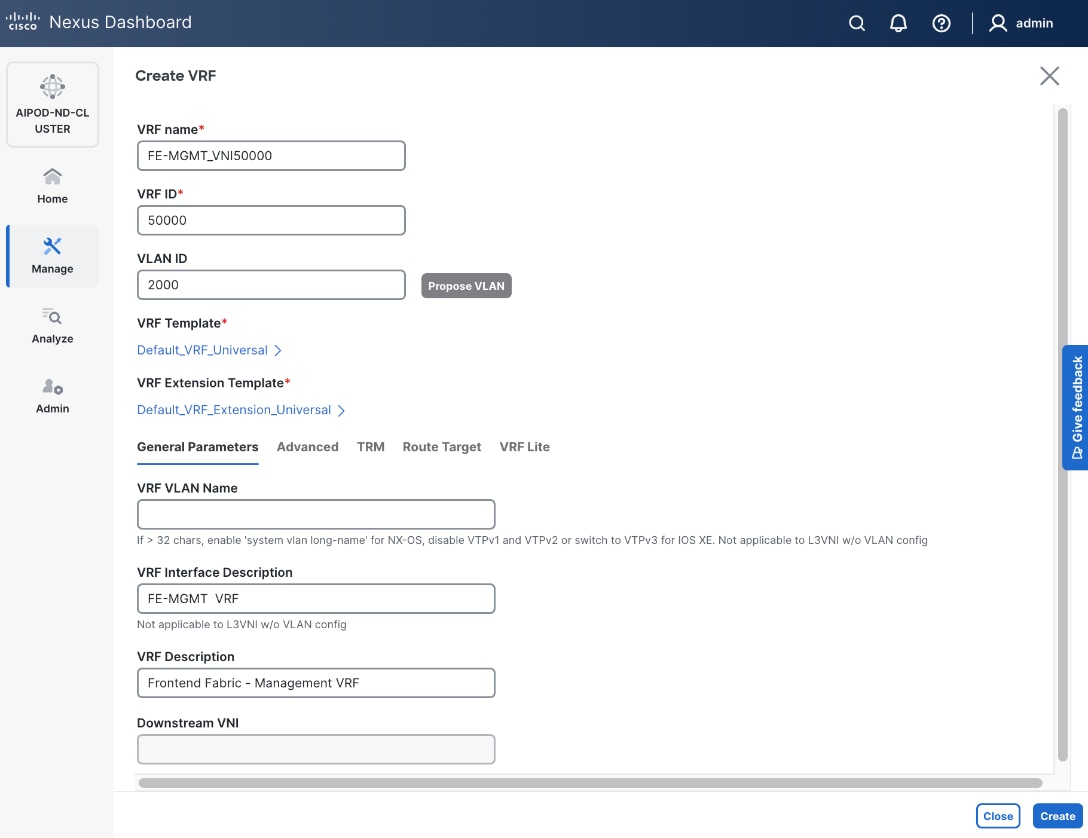

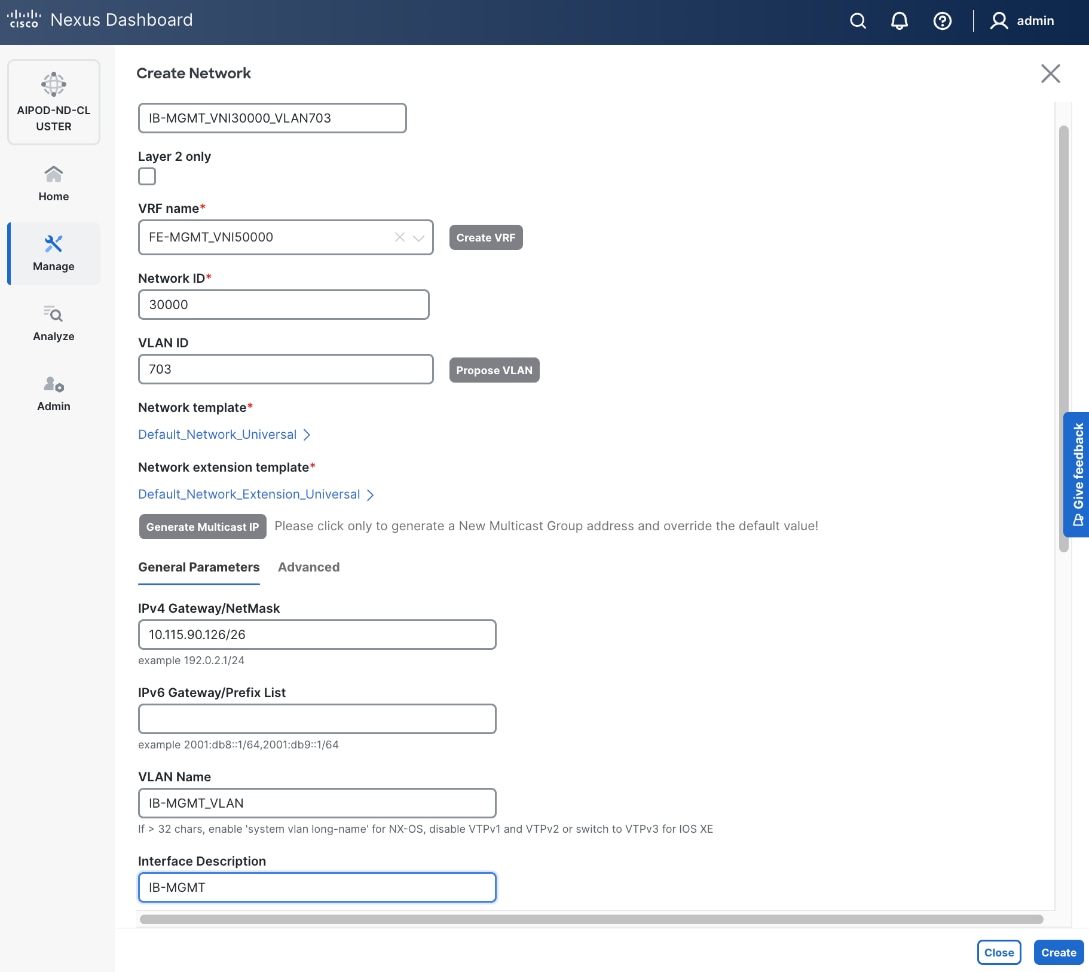

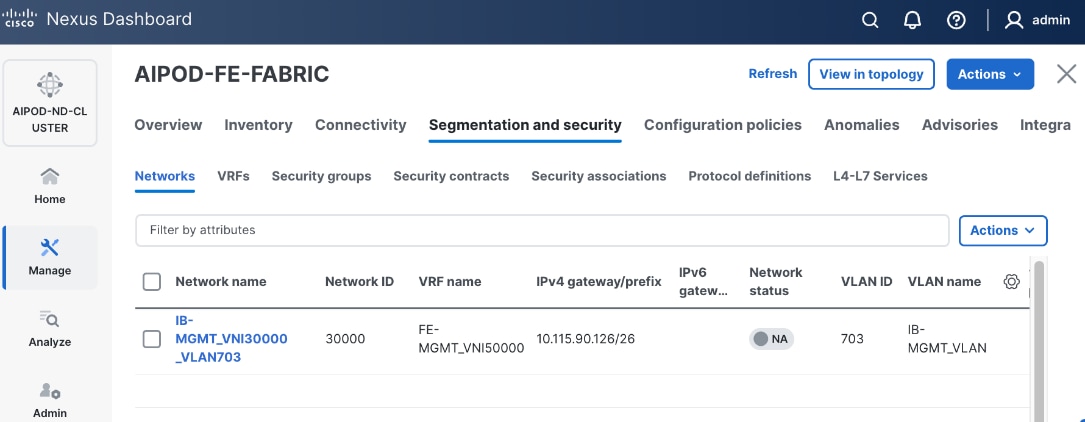

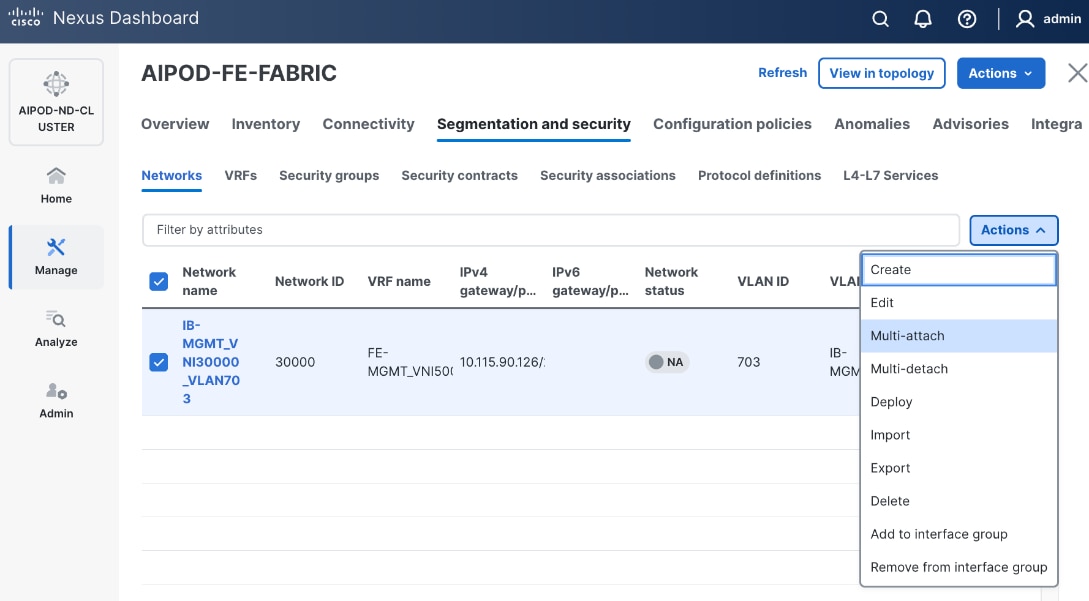

● For the Select a vPC pairSelect a vPC pair, select the compute leaf switch VPC pair from the dropdown list.