Cisco AI POD for Enterprise Training and Fine-Tuning Design Guide

Available Languages

Bias-Free Language

The documentation set for this product strives to use bias-free language. For the purposes of this documentation set, bias-free is defined as language that does not imply discrimination based on age, disability, gender, racial identity, ethnic identity, sexual orientation, socioeconomic status, and intersectionality. Exceptions may be present in the documentation due to language that is hardcoded in the user interfaces of the product software, language used based on RFP documentation, or language that is used by a referenced third-party product. Learn more about how Cisco is using Inclusive Language.

- US/Canada 800-553-2447

- Worldwide Support Phone Numbers

- All Tools

Feedback

Feedback

Published: January 2026

About the Cisco Validated Design Program

The Cisco Validated Design (CVD) program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, go to: http://www.cisco.com/go/designzone

Executive Summary

Artificial intelligence (AI) and Machine Learning (ML) are fundamentally reshaping organizations, driving investment, innovation, and new opportunities. While many enterprises are launching AI initiatives, ensuring their success presents significant infrastructure and operation challenges.

Cisco AI PODs deliver a comprehensive architecture for building scalable, secure, and right-sized AI/ML infrastructure for enterprises. These full-stack solutions are available in easy-to-order bundles, backed by Cisco Validated Designs (CVDs) and solution-level support through Cisco TAC. By simplifying the design and deployment of AI/ML infrastructure, Cisco AI PODs accelerate AI initiatives, enabling enterprise IT and Lines-of-Business (LOB) teams to confidently deploy AI-ready infrastructure that delivers meaningful business outcomes.

AI POD architecture is designed to adapt to rapidly evolving AI technologies by leveraging the full breadth of Cisco’s Data Center portfolio and strong partnerships with industry leaders. AI PODs are built using Cisco Unified Computing System (UCS) servers with Cisco Intersight, Cisco Nexus networking with Nexus Dashboard, and Splunk Observability Cloud. The design incorporates AI infrastructure best-practices from the Cisco Nexus 9000 platform and serves as a foundational building block of the Cisco Secure AI Factory with NVIDIA. The solution incorporates recommendations from NVIDIA’s Enterprise Reference Architecture and integrates with key storage partners, including NetApp, Pure Storage, and VAST Data.

While this guide currently focuses on core infrastructure, Cisco AI PODs are designed to evolve, enabling future integration with Cisco’s advanced security solutions like Cisco AI Defense, Cisco Hypershield, and Cisco Smart Switches with DPUs to deliver enhanced, distributed protection in AI/ML deployments.

This document is the Cisco AI POD for Enterprise Training and Fine-Tuning Design Guide, detailing the end-to-end solution architecture and design. Upcoming Deployment Guides will provide prescriptive, step-by-step instructions for building and deploying this solution in an enterprise data center. The three Deployment Guides will cover the specific design validated in Cisco IT labs, with a separate guide for each storage partner: NetApp, Pure Storage, and VAST Data.

The complete portfolio of Cisco AI POD CVDs, including this Design Guide and all upcoming Deployment Guides, is available here: Cisco Validated Design Zone for AI-Ready Infrastructure.

Solution Overview

This chapter contains the following:

● Audience

AI/ML is rapidly transforming enterprise organizations, driving a need for reliable, scalable, and secure AI ready infrastructure. This guide provides a comprehensive architecture for an end-to-end, high performance AI infrastructure for model training and fine-tuning in enterprise data centers.

The solution consists of Cisco UCS C-Series (C885A M8 and C845A M8) GPU servers connected to two network fabrics built using Cisco Nexus 9000 Series switches. The AI POD architecture is designed to meet enterprise requirements and features a flexible, modular design that supports deployments starting at 32, 64, or 128 GPU clusters. These clusters serve as foundational building blocks (or Scale Unit Types) that can then be incrementally scaled to support 256, 512, or higher GPU clusters.

This guide is for IT architects, infrastructure engineers, and AI/ML practitioners responsible for designing, building, and managing AI/ML infrastructure in enterprise data centers.

The AI POD designs described in this document are specifically tailored for resource-intensive training and fine-tuning workloads. This guide provides the architectural blueprint and key design considerations necessary to plan a successful AI POD deployment.

This CVD document describes the architecture and design of the Cisco AI POD for AI/ML Training and Fine-tuning. It details the infrastructure building blocks, network fabrics, storage options, and software stacks required to support an AI training cluster running. While the Cisco AI POD architecture can support a range of AI workloads, including inference, the primary focus of this design guide is on enterprise model training and fine-tuning.

Enterprise AI Requirements and Challenges

Enterprises launching AI initiatives and projects need infrastructure that is scalable, secure, and optimized for different AI workloads. This infrastructure must also be designed to minimize operational complexity and ease integration into existing data center environments. However, achieving this poses several challenges that can impact the success of these AI initiatives and delay time-to-value. Organizations must overcome these infrastructure, operations, and security hurdles, all while keeping pace with the rapid evolution of AI technologies.

Some of the critical requirements and challenges that organizations must address include:

● Right-Sizing Infrastructure: Different AI workloads such as training, fine-tuning, and inferencing have different infrastructure requirements. These are influenced by factors such as model size, precision, dataset size, and performance expectations including job completion times and user experience. Enterprises must right-size infrastructure and balance resources across multiple AI projects, typically on shared infrastructure, to avoid over-provisioning or performance issues from under-provisioning.

● Operational Complexity: Building and managing AI infrastructure is inherently complex, requiring new expertise across traditional domains (compute, network, storage) and new ecosystem software and tools. The ramp-up required to support GPU-dense servers, high-performance networking and specialized software and tools, significantly increases the operational burden on IT teams, making integration and efficient management of AI projects a significant challenge.

● Integration into Existing Data Centers: AI infrastructure should ideally integrate with ease into existing enterprise data centers. However, the power, cooling, and specialized networking requirements of AI training clusters make this challenging. While some challenges are unavoidable, strategic design choices can dramatically ease this integration. By leveraging familiar operational models, network design patterns, and software stacks, enterprises can minimize the learning curve and reduce the operational burden on IT teams managing both AI and traditional infrastructure.

● Scaling with Multi-Tenancy: Enterprise AI initiatives often involve multiple teams and lines of business, typically sharing the same infrastructure. This infrastructure must support the various tenant workloads without compromising on performance or reliability. Designing a multi-tenant infrastructure that delivers consistent performance and quality of service (QoS) while maintaining isolation and fairness is critical for AI adoption at scale.

● New Traffic Patterns: AI model training and fine-tuning are highly data-intensive processes that introduce new and demanding traffic patterns within enterprise data centers. This traffic can overwhelm traditional infrastructure, leading to poor AI workload and application performance, such as longer training and fine-tuning job completion times, and higher inference latency.

● Security Vulnerabilities: AI expands the attack surface and introduces new and evolving threat vectors (for example, models, frameworks, applications) that add to enterprise challenges. Protecting sensitive enterprise data and ensuring the integrity of AI models and applications is critical. Key vulnerabilities that an organization may encounter because of this expanded attack surface include:

◦ Compromised datasets used in training/fine-tuning that can skew AI model behavior.

◦ Malicious inputs designed to trick and manipulate model behavior.

◦ Vulnerabilities in underlying AI frameworks or libraries that can be exploited by attackers.

◦ Misconfigured infrastructure components that leave systems exposed to breaches.

● Operational Silos: The complexity of the AI/ML stack, often involving new hardware and software components, can create operational and management silos with fragmented visibility. Lack of end-to-end observability can hinder effective monitoring and troubleshooting, leading to increased operational complexity, delayed problem resolution, and sub-optimal performance.

AI Workloads and Infrastructure Implications

This chapter contains the following:

● Training and Fine-Tuning Workloads

AI workloads have distinct characteristics that place specific demands on the underlying infrastructure. Understanding these differences, particularly between training, fine-tuning, and inferencing, is essential for designing AI infrastructure that is right-sized and optimized for the workload. This guide focuses on training and fine-tuning, which impose the most demanding requirements on compute and networking.

Training and Fine-Tuning Workloads

Training and fine-tuning AI models are typically non-production, offline tasks focused on developing a model from scratch or customizing an existing one. The primary performance metric for these workloads is Job Completion Time (JCT).

While a shorter JCT is desirable, the decision to invest in a larger GPU cluster to reduce JCT is highly organization-specific and depends on the organization’s unique operational and business priorities. For example, one organization might justify a larger cluster to reduce a critical model’s JCT by a few hours, while another might only consider scaling if it cuts JCT by days or weeks. This is especially true for less frequently trained models or where data scientists can work on other tasks while these jobs run.

Justifying additional resources for lower JCT involves a cost-benefit analysis that goes well beyond the direct cost of GPUs. Scaling a GPU cluster significantly impacts the entire supporting infrastructure, requiring more power, cooling, network bandwidth, storage I/O, and physical rack space. These costs, coupled with increased complexity, must be weighed against the business value of faster model iteration and rollout.

To achieve optimal JCTs, the infrastructure must meet the following requirements:

GPU Cluster Size: Training foundational models, such as Large Language Models (LLMs), require massive parallel processing power from large clusters of high-performance GPUs. In contrast, fine-tuning these same models requires significantly fewer resources. GPU requirements vary greatly based on model size, dataset size, and the fine-tuning method used. Table 1 illustrates this difference, comparing the resources used to train Llama 3.1 models against the estimated resources needed for full fine-tuning on different NVIDIA GPU models.

Table 1. Estimated Resource Requirements for Training vs. Full Fine-Tuning

| AI Model |

Model Size (Parameters) |

Training (H100 GPUs) |

Full Fine-Tuning (Estimated Memory) |

Estimated GPU Resources – Full Fine-Tuning (H100 with 80GB of GPU memory) |

Estimated GPU Resources – Full Fine-Tuning (H200 with 141GB of GPU memory) |

| Llama 3.1 |

8B |

32 GPUs |

60GB |

60GB/80GB = ~1 GPU |

60GB/141GB = ~1GPU |

| Llama 3.1 |

70B |

256+ GPUs |

500GB |

500GB/80GB = ~7 GPUs |

500GB/141GB = ~4 GPUs |

| Llama 3.1 |

16,000 GPUs |

3.25TB |

3.25TB/80GB = ~40 GPUs |

3.25TB/141GB = ~24 GPUs |

Source: https://arxiv.org/pdf/2407.21783 | https://huggingface.co/blog/llama31#training-memory-requirements

Table 1 shows that the GPU requirements for fine-tuning are orders of magnitude lower than those of training. These requirements can be further reduced by using more performant GPUs (for example, H200 instead of H100). The choice of fine-tuning method, from 32-bit full fine-tuning to 4-bit Parameter Efficient Fine-Tuning (PEFT), also has a significant impact on GPU requirements, as shown in Table 2.

Table 2. Estimated Resource Requirements for Fine-Tuning a Llama 3.1 70B model using H200 SXM GPUs

| AI Workload |

Model Size |

Precision |

Estimated Memory Requirement |

Estimated GPU Resources |

| Full Fine-Tuning |

70B |

32-bit |

1200GB |

1200GB/141GB = ~9 GPUs |

| Full Fine-Tuning |

70B |

16-bit |

600GB |

600GB/141GB = ~5 GPUs |

| PEFT – LoRA/Badam/… |

70B |

16-bit |

160GB |

160GB/141GB = ~2 GPUs |

| PEFT - QLoRA |

70B |

8-bit |

80GB |

80GB/141GB = ~1 GPU |

| PEFT - QLoRA |

70B |

4-bit |

48GB |

48GB/141GB = ~1 GPU |

Source: https://github.com/hiyouga/LLaMA-Factory?tab=readme-ov-file#hardware-requirement

Note: In the provided scenarios, the actual memory requirements and GPU resources required can vary depending on factors such as dataset size, optimizations, and model architecture.

Key Takeaway: A typical enterprise fine-tuning workload for a large model requires a cluster of 32 to 64 GPUs. To support multiple similarly-sized workloads concurrently (for different projects), the GPU cluster size must scale accordingly, yet it remains significantly smaller than the thousands of GPUs required to train a foundational model from scratch.

Stringent Network Requirements: Training and fine-tuning rely on critical GPU-to-GPU communication. These workloads involve frequent synchronization and collective operations to exchange data between GPUs allocated to the workload. This requires a network fabric that provides:

● High Throughput: Supports concurrent high-bandwidth data flows between GPUs.

● Low End-to-End Latency: Minimizes data exchange delays in which directly impact JCT.

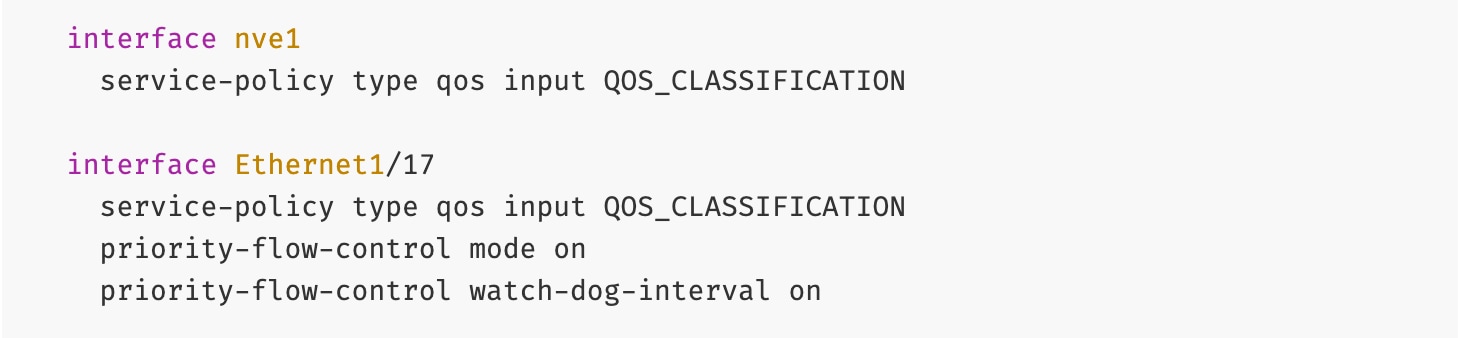

● Lossless: Packet drops can force training jobs to restart, severely impacting JCT. Technologies like Priority Flow Control (PFC) and Explicit Congestion Notification (ECN) are essential to prevent this.

● Low Jitter: Mitigates microsecond buffer overruns and transient congestion caused by synchronous, bursty GPU operations.

● Efficient Load Balancing: Training workloads often have low entropy, which can lead to inefficient link utilization with traditional Equal-Cost Multi-Path (ECMP. AI/ML networks require advanced load-balancing strategies to maximize link utilization and minimize congestion.

High Availability, Security, and Operations: While important, these aspects are generally less critical for pre-production training and fine-tuning environments when compared to production inferencing, where real-time user interaction and continuous service are paramount.

Workload Management: Efficient scheduling and orchestration of workloads and GPU resources are essential for managing complex training and fine-tuning jobs.

Unlike training, inferencing workloads process live, production traffic, often in real-time. Performance is measured by latency and throughput metrics, which are influenced the number of concurrent users, input/output token sizes, and batch sizes.

Key metrics for inferencing include:

● Time-to-First Token (TTFT): Measures the interval between a user prompt to receiving and the first output token. This is critical for perceived responsiveness, as it represents a user’s wait time before seeing the start of a response.

● End-to-End Request Latency: Measures the total time to receive a complete response. This metric represents the user's total wait time for an answer; a function of TTFT and the generation time for the remaining tokens.

● Time per Output Token (TPOT) or Inter-Token Latency: Measures the average time between subsequent tokens after the first.

● Requests per Second: Measures the volume of user requests that the system successfully completed per second.

● Tokens per Second or Throughput: Measures the total system-wide output tokens per second across all concurrent requests.

Inferencing infrastructure typically requires:

● Smaller GPU clusters: Fewer GPUs compared to training and fine-tuning. Even large models that require multiple GPUs are typically contained within a single server.

● Less stringent network requirements: Can utilize existing 100/200 GbE data center fabrics.

● High Availability, Security, and Observability: Critical for production inferencing, similar to other mission-critical applications running in enterprise data centers.

It is important to note that inferencing landscape is evolving rapidly. New agentic AI workloads, where models engage in multi-step reasoning, use tools, and interact with other AI agents or systems, will likely impose new demands on the AI infrastructure, including more complex communication patterns, higher sustained data rates, and lower latency requirements.

While inferencing is critical for enterprises, its architecture and designs are outside the scope of this guide. See Cisco Validated Design Zone for AI-Ready Infrastructure for Inferencing AI POD CVDs.

Cisco AI PODs: A Complete Solution for Enterprise AI

This chapter contains the following:

● How AI PODs Address Enterprise AI Challenges

Cisco AI PODs provide a validated, integrated architecture that meets the stringent requirements of AI workloads. This section introduces the AI POD architecture and outlines its role as a foundational infrastructure stack for enterprise AI deployments.

Cisco AI PODs are modular, full-stack infrastructure solutions designed to accelerate a wide range of AI initiatives and use cases in enterprise organizations. Cisco AI PODs support various AI workloads—including training, fine-tuning, inferencing—enabling enterprises to confidently deploy and operate infrastructure that meets diverse AI requirements.

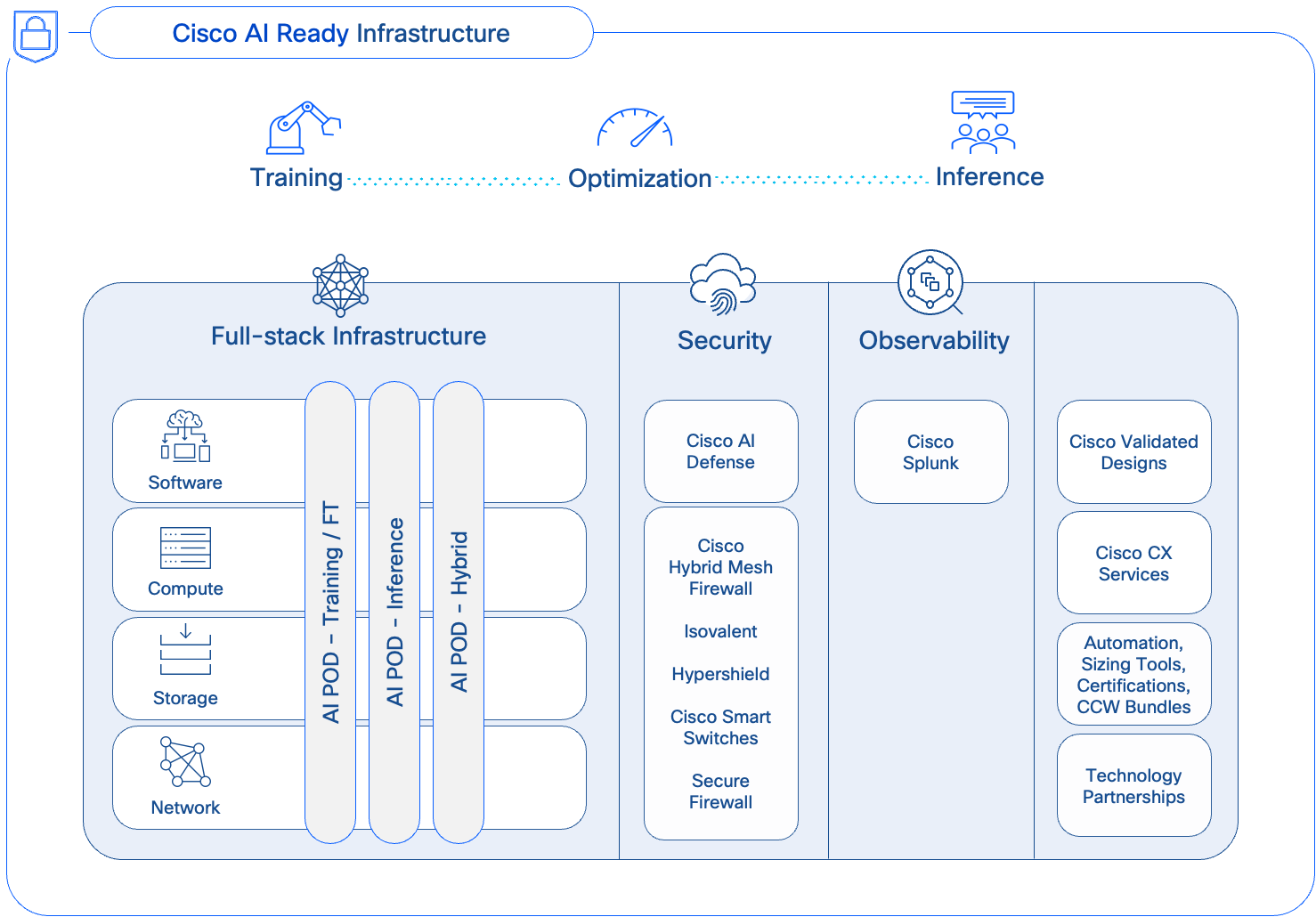

AI PODs are a core component of Cisco’s AI strategy (Figure 1) for delivering secure, AI-ready infrastructure for enterprise organizations. They can be deployed as dedicated, fit-to-purpose stacks for specific AI workloads (for example, Training) or as hybrid stacks, supporting multiple AI workloads (for example, Training and Inferencing). This gives enterprises the flexibility to support multiple AI projects and workloads across the organization.

Cisco AI PODs simplify the path to AI by providing fully integrated, easy-to-order bundles. Backed by CVDs and Cisco TAC support, AI PODs enable Enterprise IT and LOB teams to quickly deploy full-stack, AI-ready infrastructure without compromising on flexibility or choice.

How AI PODs Address Enterprise AI Challenges

Cisco AI PODs provide a comprehensive, full-stack architecture that addresses the infrastructure and operational challenges of enterprise AI. By delivering a high-performance, right-sized, and scalable solution, AI PODs adapt to evolving needs while simplifying design, deployment, and management.

● Right-sized Infrastructure for Enterprise AI Workloads: Enterprise AI applications require models spanning from classic predictive ML models to Small Language Models (SLMs) and generative LLMs with billions of parameters. A single application may also use a combination of models depending on the use case. Consequently, as AI adoption grows, organizations will need to support dozens, and eventually hundreds, of diverse models.

Since most enterprises focus on customizing pre-trained foundational models using techniques like fine-tuning and Retrieval-Augmented Generation (RAG), these tasks represent the most common AI workloads in enterprise deployments. Though resource-intensive, these workloads require significantly fewer GPUs than training from scratch, as illustrated in Tables 1 and 2. AI PODs meet this need with modular Scale Unit Types (32, 64, or 128 GPUs) to ensure efficient resource utilization without costly over-provisioning. This modular approach allows enterprises to select an optimal starting cluster size for their AI initiatives and then scale precisely and incrementally as their needs evolve.

● Optimized for AI Workloads: AI PODs feature non-blocking, lossless, high-bandwidth, and low-latency network designs engineered to handle the data-intensive traffic patterns of model training and fine-tuning. This eliminates network bottlenecks, and ensures optimal JCT.

● Multi-tenancy: To support concurrent workloads from multiple projects and LOBs, the AI POD design uses MP-BGP VXLAN EVPN to create secure, isolated environments for each tenant. VXLAN provides data plane isolation by encapsulating each tenant’s traffic into a unique virtual network (L2 or L3), while MP-BGP ensures control plane isolation through Virtual Routing and Forwarding (VRF) instances.

● Operational Ease and Consistency, at Scale: Modular building blocks and scale-out spine-leaf designs in the AI POD architecture, enable enterprises to incrementally expand their infrastructure without complex redesigns, while maintaining design and operational consistency at scale.

● Simplified Data Center Integration: AI PODs simplify integration by using familiar technologies, tools, and design patterns commonly seen in enterprise data centers. The AI POD design leverages familiar management tools like Cisco Intersight and Nexus Dashboard, along with established network designs like VXLAN EVPN fabrics and Spine-Leaf topologies. This alignment provides operational consistency, simplifies adoption, and reduces the learning curve for IT teams managing both AI and traditional infrastructure.

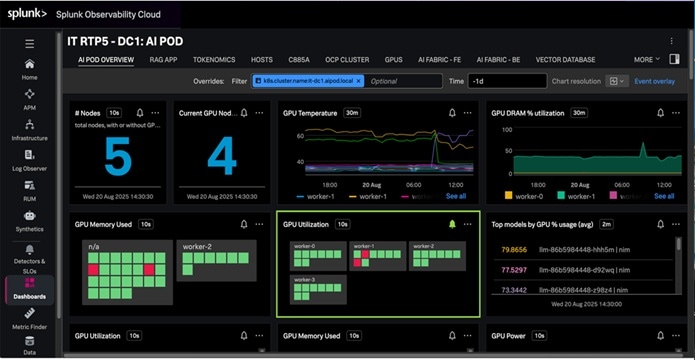

● Enhanced Observability: Powered by the Splunk Observability Cloud, AI PODs deliver end-to-end visibility across the entire AI/ML stack. This integrated observability helps to eliminate operational silos, streamline monitoring, and accelerate troubleshooting and performance optimization.

● Strengthening Security Posture (Future Integration): While the current focus is on the core compute, networking and storage infrastructure, AI PODs will evolve and integrate with advanced Cisco security solutions (for example, AI Defense, Hypershield) that protect against new threat vectors emerging in AI/ML deployments.

When planning an AI infrastructure for training and fine-tuning, a basic decision is determining the optimal size of your AI training cluster. Right-sizing this cluster requires a clear understanding of workload needs and translating these requirements into GPU resources necessary to meet target Job Completion Times.

Note: In this context, a workload refers to the specific model being trained or fine-tuned, and not the broader AI use case.

Key Factors for Sizing Your GPU Cluster

The size of your GPU cluster is determined by two main factors:

● Single Largest Workload: This is the single model (or workload) that requires the most GPU resources for one training or fine-tuning job. It sets the baseline for the minimal number of GPUs required per workload and the optimal connectivity for the GPUs assigned to this job.

● Concurrent Workload Requirements: This is the number of similar or smaller-sized workloads that need to run concurrently on the cluster. This will determine the total cluster capacity, at peak load, across all projects and tenants.

In an enterprise setting, the concurrent workloads could be from multiple projects, LOBs or even single use cases that involve several models. What matters ultimately is the total number of models (or workloads) that must be hosted on this infrastructure, as this directly impacts the cluster size and the infrastructure required to support it.

For sizing the cluster, the largest workload is important for two reasons. First, it defines the minimal GPU resources required to run the most demanding workload in your environment, which can then be used to determine the overall cluster capacity based on the number of concurrent workloads this infrastructure needs to support. Secondly, it directly influences the connectivity design for GPU-to-GPU communication. All GPUs allocated to the same workload should ideally have the most optimal, non-blocking connectivity, which has a bearing on how the GPU nodes are connected to the fabric. For more information, see the Backend Fabric Design (East-West) section.

Approaches to Sizing

Estimating the largest workload can be challenging, as users often request the latest, largest models to future-proof their projects, making it difficult for IT teams to right-size the infrastructure.

Given this, two practical approaches that enterprises could take are:

● For workloads migrating to on-prem from the cloud, use historical resource consumption as a starting point.

● For workloads without historical data, use a relatively large, current LLM as your reference workload to model resource requirements.

As previously stated, customizing an LLM is often the largest, most-demanding workload for an enterprise. By estimating the GPU resources required for this single task and then multiplying it by the expected number of concurrent workloads (across all tenants), you can calculate the total GPU resources required and begin designing the complete AI infrastructure.

Architecture

This chapter contains the following:

● Key Infrastructure Building Blocks

● Dual Fabrics: Backend and Frontend Networks

● Modular and Scalable Design: Scale Units and Scale Unit Types

The Cisco AI POD is a modular architecture designed to provide a scalable and performant infrastructure for distributed AI training and fine-tuning. This section provides a high-level overview of the end-to-end solution and the core hardware and software building blocks that make up the architecture.

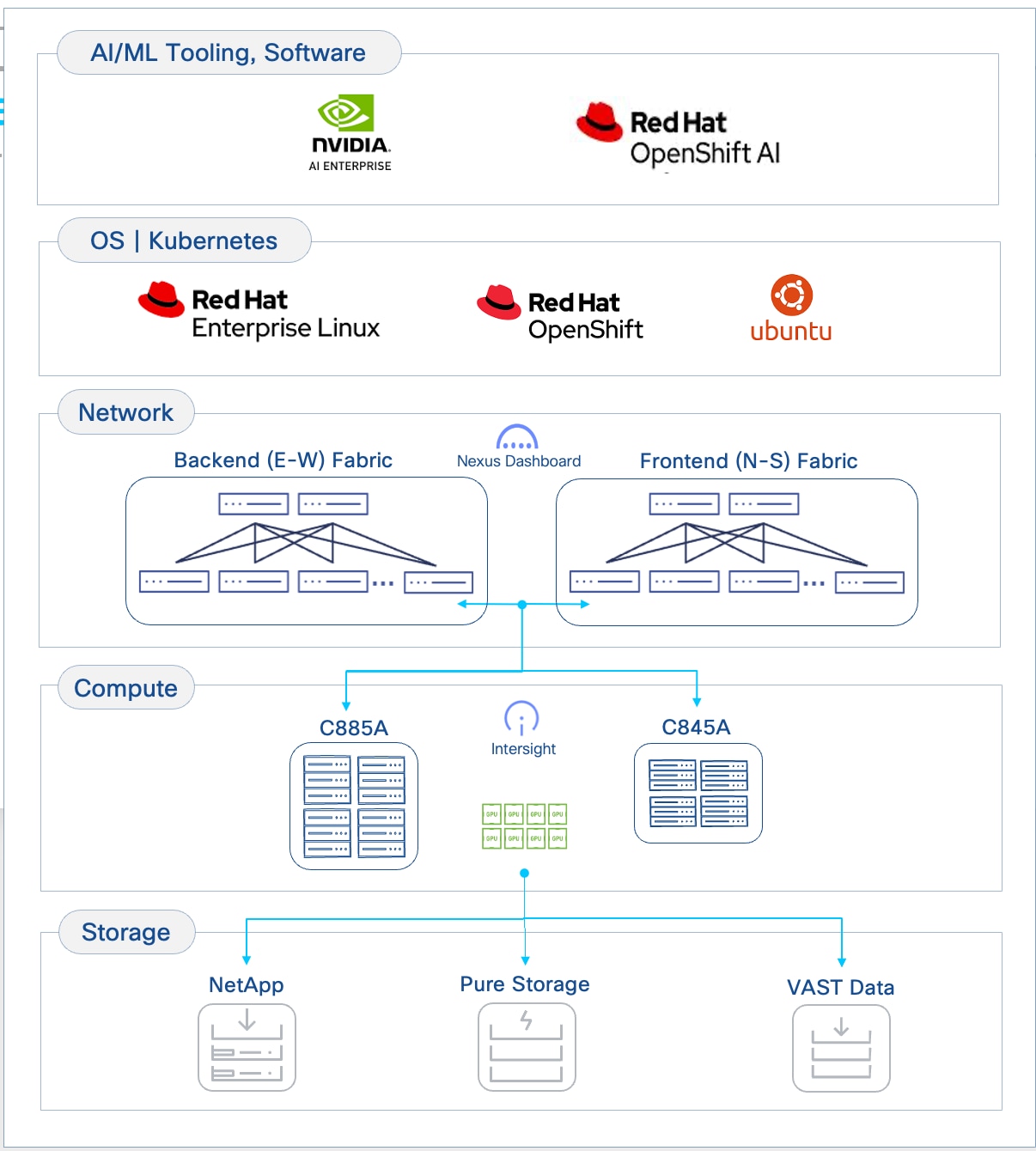

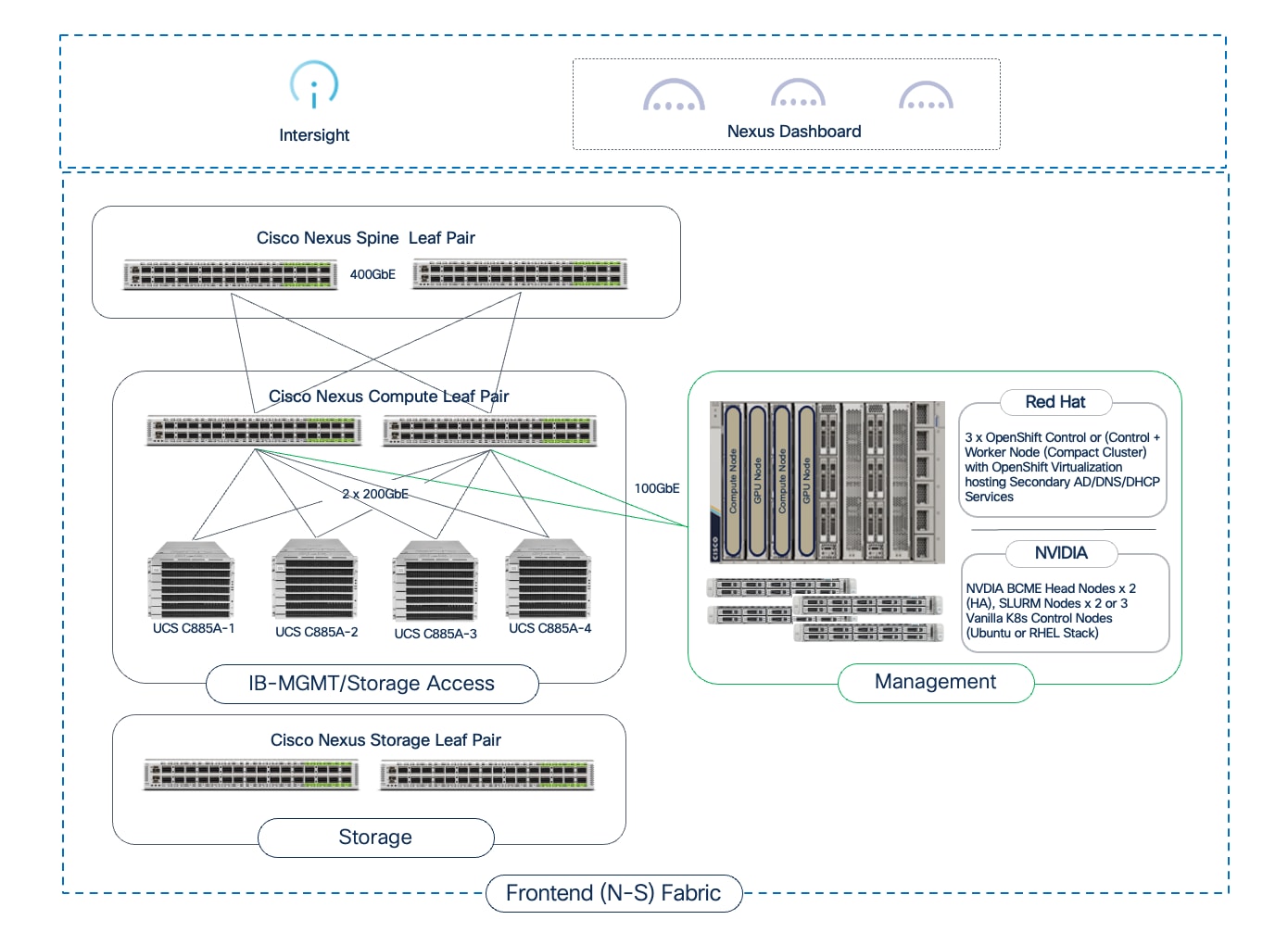

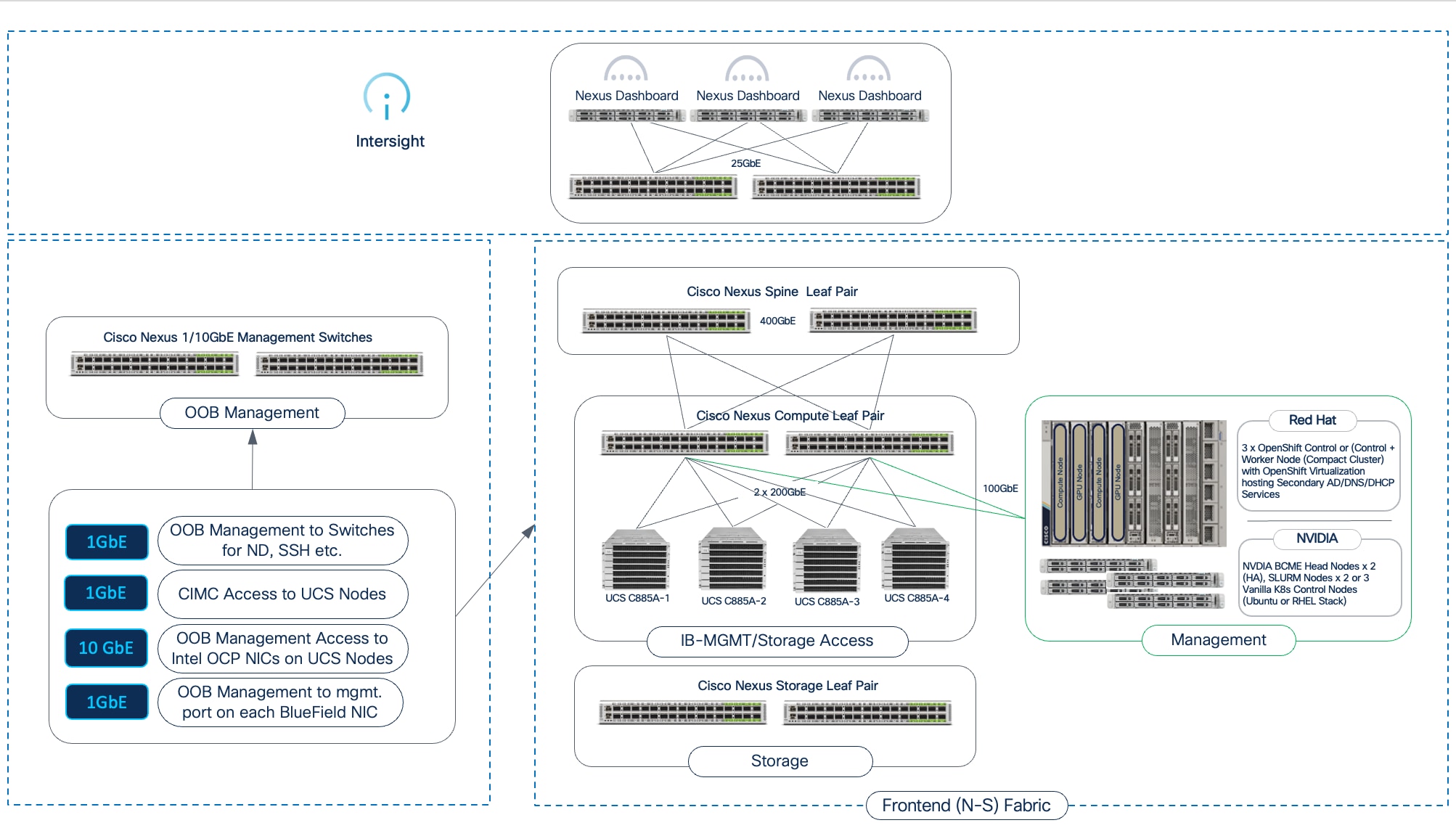

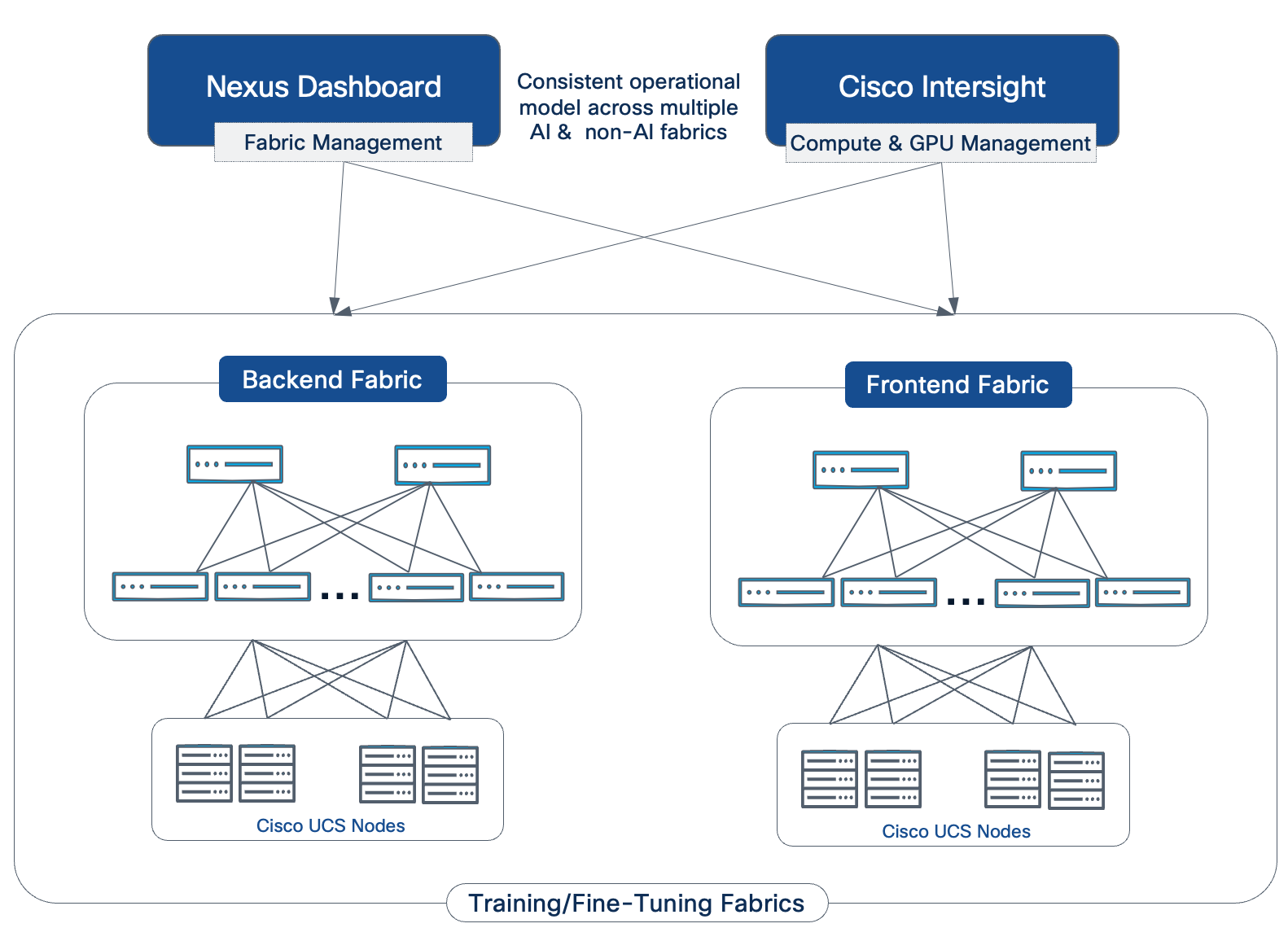

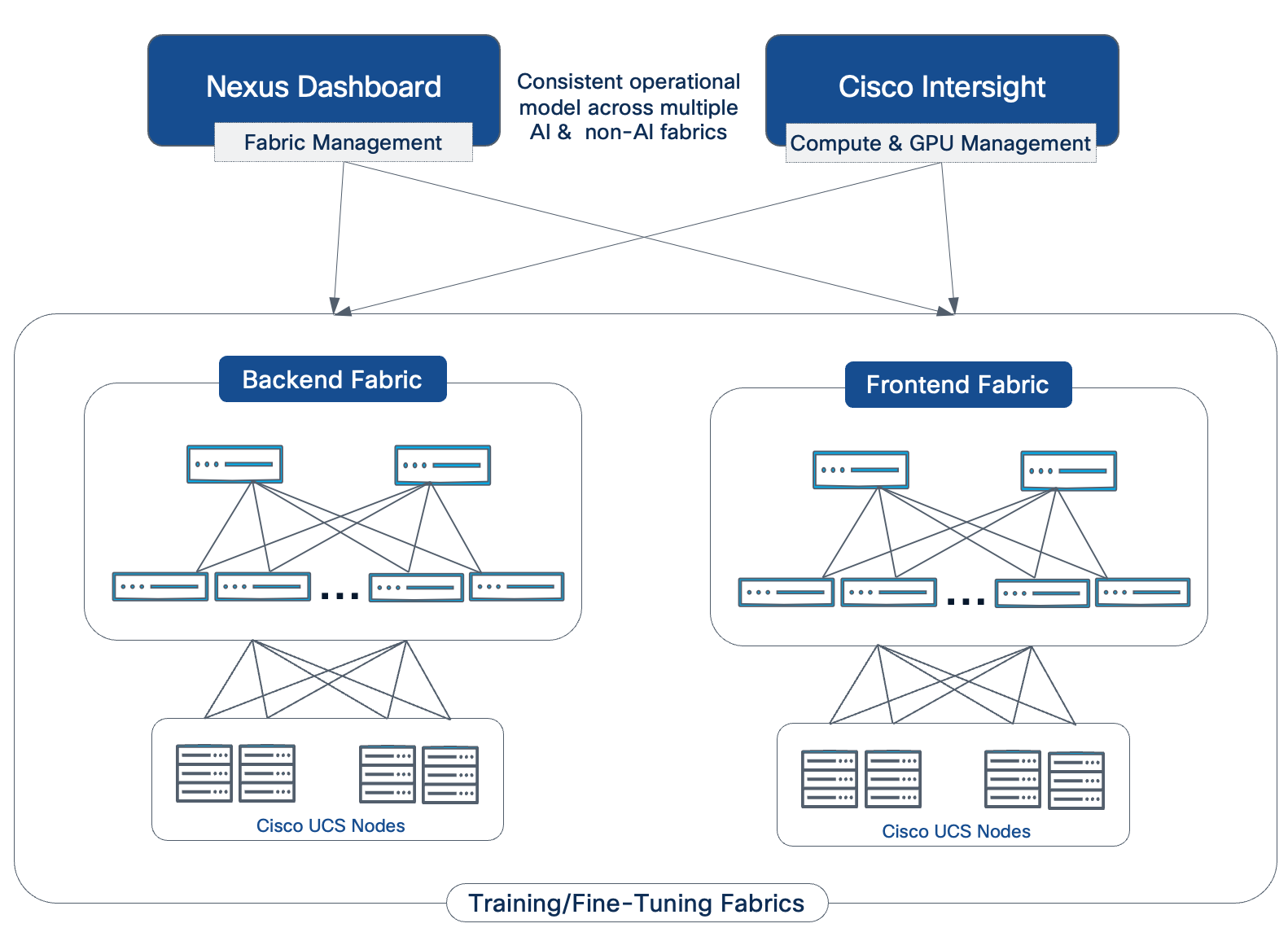

Figure 2 illustrates the high-level architecture of the AI POD.

The design takes a modular, building blocks approach, integrating compute (CPU, GPU), networking, storage, operating system (OS)/Kubernetes (K8s) platform, and AI software components. This delivers an AI-ready infrastructure that enterprises can deploy to support a range of AI initiatives and scale as needed with consistency, simplicity and operational ease.

Key Infrastructure Building Blocks

Cisco provides a comprehensive portfolio of products and solutions optimized for the full-breadth of the AI/ML lifecycle—from training to inferencing to hybrid deployments. Cisco AI PODs deliver foundational AI/ML infrastructure stacks designed to address an organization’s current and future AI needs. The AI POD solutions will continue to evolve, incorporating capabilities from Cisco’s extensive portfolio across compute, network, security, and observability to deliver a robust AI infrastructure stack for enterprise organizations.

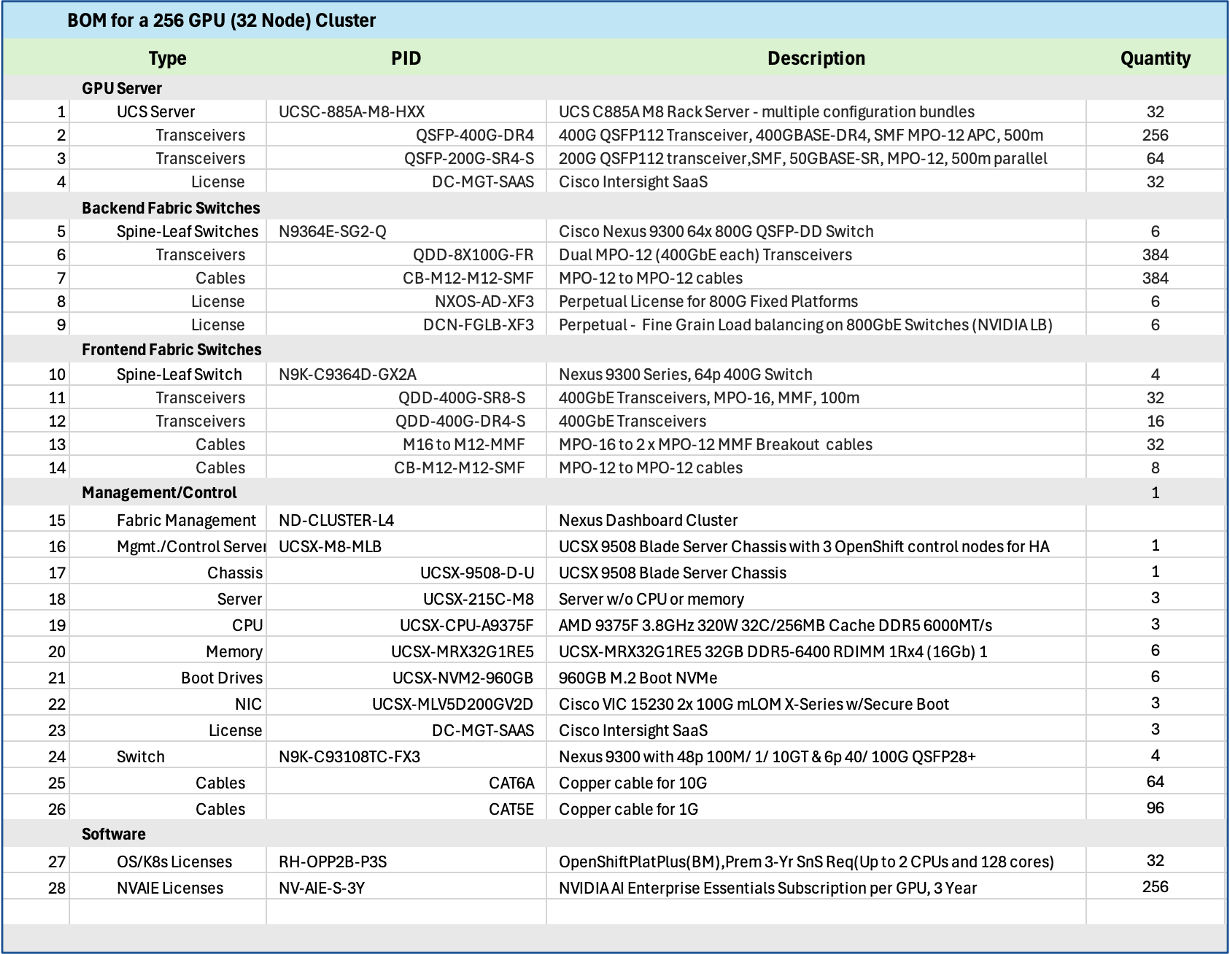

The Cisco AI POD architecture is a fully integrated, full-stack solution built from the following core components:

● Compute: Purpose-built Cisco UCS AI servers provide the GPU-dense compute power necessary for large-scale distributed training and fine-tuning.

● Network: The Cisco Nexus 9000 series provides a high-bandwidth, low-latency network fabric, managed by Cisco Nexus Dashboard, to ensure lossless communication for both backend (East-West) and frontend (North-South) traffic.

● Storage: Validated enterprise storage from industry-leading partners (such as NetApp, Pure Storage, and VAST) provides the high-performance, scalable, and reliable data access essential for data-intensive AI workloads.

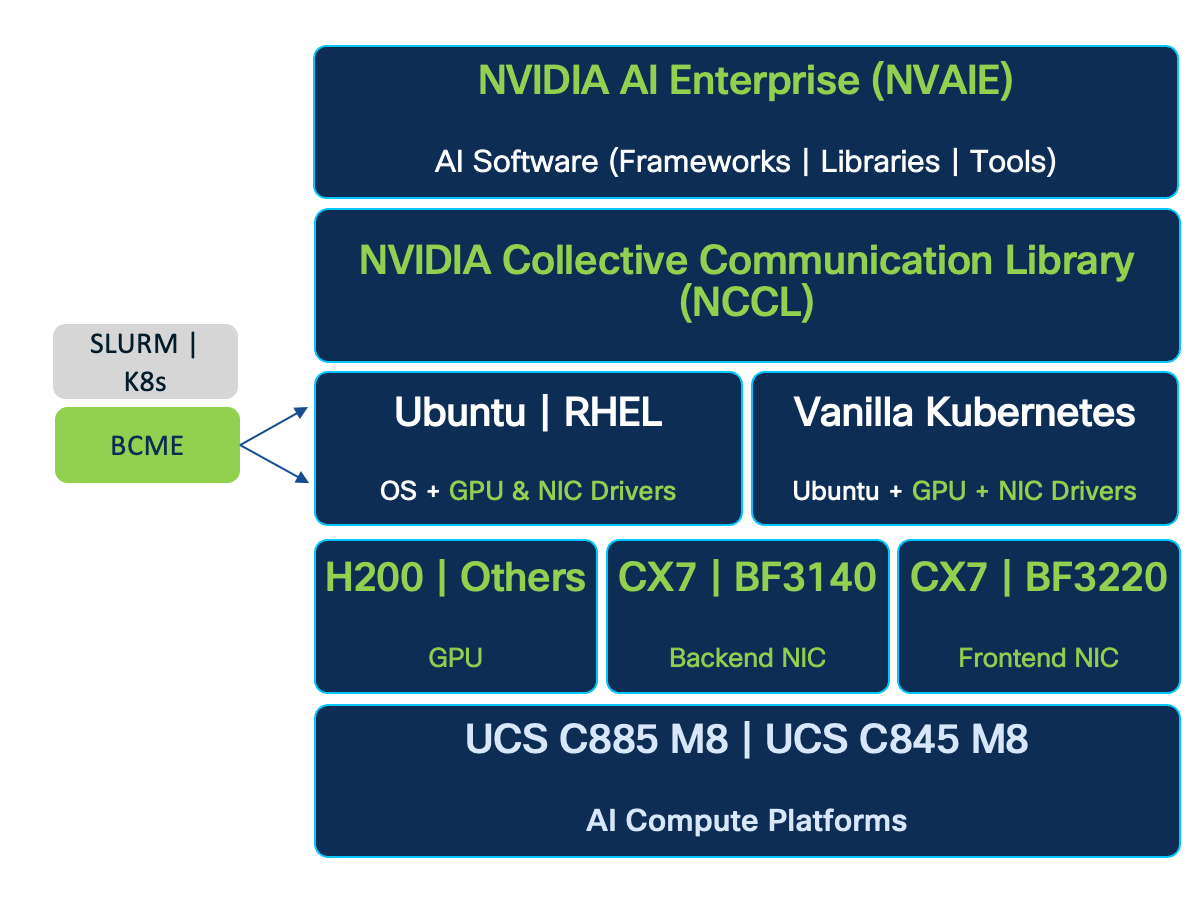

● Software Stack: A comprehensive software stack combines the operating system, workload orchestration (K8s or Simple Linux Utility for Resource Management (SLURM)), and essential GPU libraries from NVIDIA to run and manage AI workloads efficiently.

● Management: A unified management framework uses a dedicated management cluster, running on separate Cisco UCS servers, to host the control planes for the software stack. This is complemented by Cisco Nexus Dashboard and Cisco Intersight, which provide centralized management and automation for the physical network and compute infrastructure.

● Observability: End-to-end observability, powered by Splunk, delivers full-stack visibility into the health and performance of the entire AI infrastructure, from hardware to applications.

The following sections provide a detailed look at each of these building blocks.

Compute: Cisco UCS GPU Servers

The core compute platforms in this Cisco AI POD architecture are the purpose-built Cisco UCS GPU servers. These servers are designed for large-scale distributed training and fine-tuning, offering distinct options to match different deployment needs:

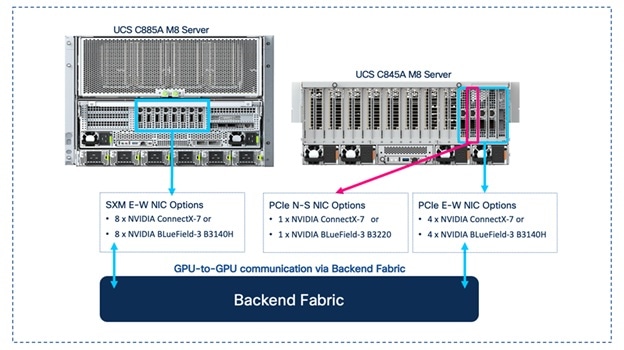

● Cisco UCS C885A M8 server is a fixed-configuration, NVIDIA SXM or AMD OAM based 8-GPU system optimized for maximum performance and density.

● Cisco UCS C845A M8 server is a flexible platform supporting 2, 4, 6, or 8 PCIe GPUs, allowing for modular scalability.

Both platforms support multiple NVIDIA and AMD GPU models with high-speed, internal GPU-to-GPU connectivity. The choice between these models will depend on the specific AI workload requirements and performance targets.

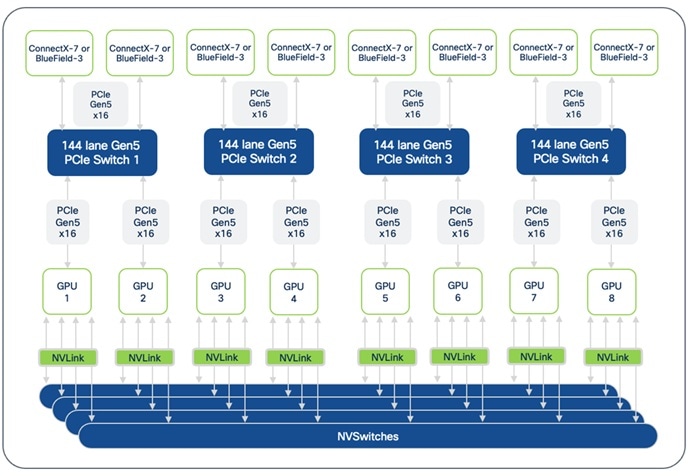

Cisco UCS C885A M8 Rack Server

AI model training and fine-tuning often involves extensive matrix multiplications and parallel computations that require high-performance GPUs with high-bandwidth connectivity, both within and across systems. The Cisco UCS C885A M8 is a high-performance, 8-RU, 8-GPU (AMD OAM or NVIDIA SXM-based) rack server designed to provide the GPU density and high-speed interconnects necessary for these workloads.

Cisco UCS C885A is based on NVIDIA’s HGX architecture, specifically the HGX 2-8-9-400 architecture using 2 CPUs, 8 NVIDIA GPUs, 9 NVIDIA NICs, and 400Gbps of bandwidth per GPU to the backend (East-West) fabric.

Note: The Cisco UCS C885A supports up to two NICs to connect to the frontend fabric.

The Cisco UCS C885A M8 features two 4th or 5th Gen AMD EPYC CPUs and up to 24 DDR5 RDIMMs (up to 6,400 MT/s) to provide the high core count and memory bandwidth needed to manage the operating system and support these AI workloads.

Dedicated, High-Speed GPU-to-GPU Interconnects

Distributed training and fine-tuning typically involves cycles of computations, followed by collective operations to synchronize states that require all or groups of GPUs to exchange data with each other, often at the same time or synchronously and using up all available bandwidth on the links. For more info on the collective operations used in a training and fine-tuning environment, see NVIDIA Collective Communications Library (NCCL) Overview and AMD’s ROCm Communication Collectives Library (RCCL) documentation.

For performant AI training and fine-tuning jobs, the dedicated high-speed GPU-to-GPU interconnects within the system will provide a low-latency, high-bandwidth fabric for efficient data and gradient synchronization across GPUs, directly impacting the speed, efficiency and scaling training jobs. The high-speed interconnects within a Cisco UCS C885A are:

● NVIDIA NVLink with NVSwitches: On a system with NVIDIA GPUs, the eight GPUs are interconnected using NVLink and NVSwitches, enabling 900 GB/s of bidirectional bandwidth between any pair of GPUs within the server.

● AMD Infinity Fabric: On a system with AMD GPUs, the eight GPUs are interconnected using AMD’s Infinity Fabric, enabling over 128 GB/s of bidirectional bandwidth using a full-mesh topology for direct GPU-to-GPU communication.

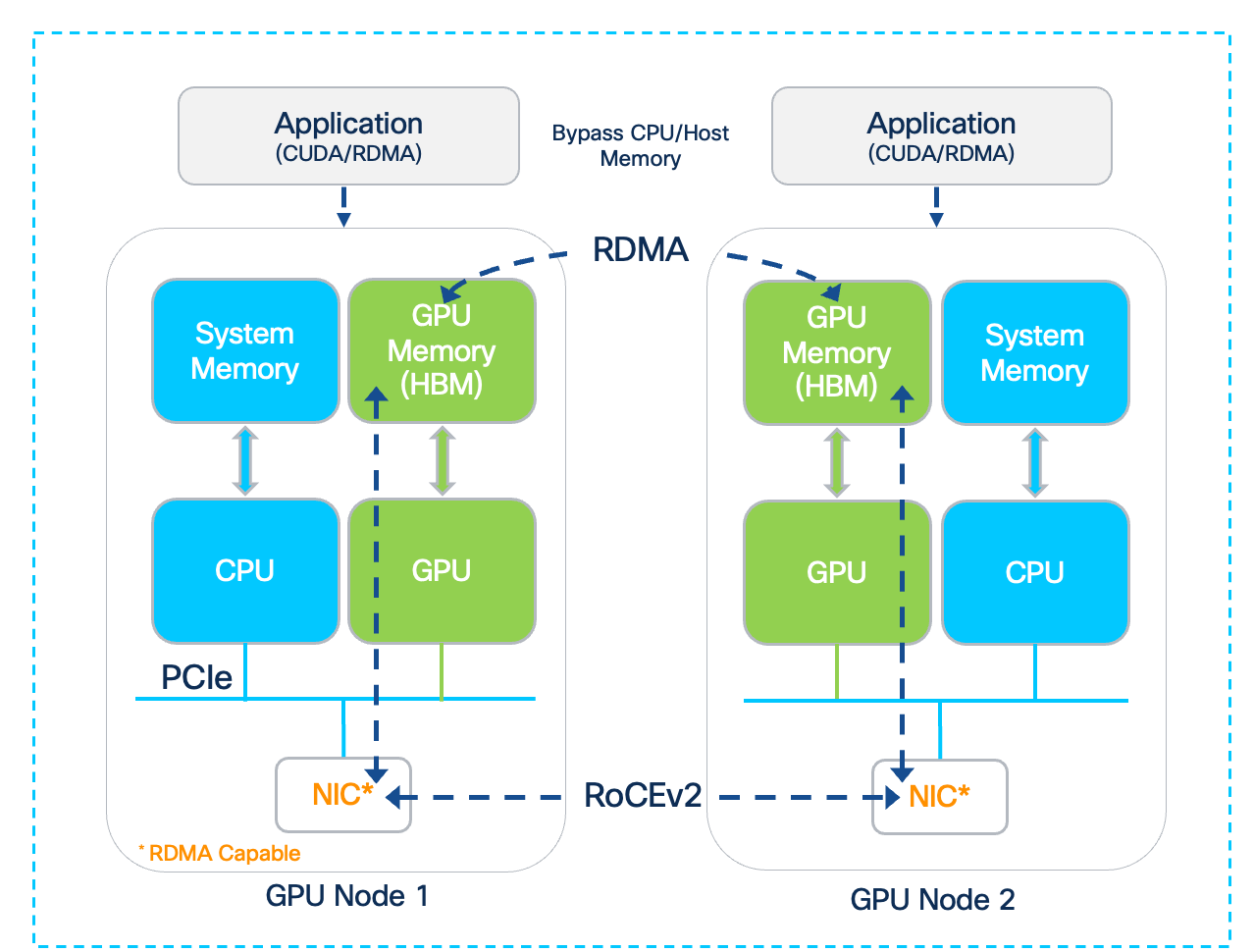

The direct GPU-to-GPU communication enabled by the dedicated interconnects bypasses the CPU and system memory for significantly faster data exchange and improved job completion times.

GPU Options

Each Cisco UCS C885A M8 server offers a fixed configuration of 8 GPUs – either NVIDIA (HGX H200) or AMD (MI300X, MI350X OAM) GPUs. These GPUs are necessary for the demanding AI workloads such as training and fine-tuning of Large Language Models (LLMs) as well as distributed inferencing with large parameter (70B+) models.

For up-to-date information on the GPU models available on the Cisco UCS C885A, see Appendix A - References.

External Network Connectivity for Scaling Training and Fine-Tuning Clusters

Unlike inferencing, AI training and fine-tuning often extends beyond a single server, requiring clusters of dense 8-GPU servers, anywhere from 4, 8, 16 nodes to as high as 64, 128 nodes in enterprise deployments. To facilitate this scaling, Cisco UCS C885A M8 servers provides the following network interface options:

● NICs for connectivity to Backend(BE) / East-West(E-W) Fabric: Each Cisco UCS C885A server is equipped with eight PCIe Gen5 x16 HHHL slots and eight dedicated (1x400G) NICs (for example, NVIDIA BlueField-3 B3140H SuperNIC or ConnectX-7 or Pensando Pollara 400) for connecting to the BE network fabric. The NICs provide high-bandwidth, low-latency GPU Direct RDMA communication between GPUs residing on different C885A M8 servers connected to the same backend fabric.

● NICs for connectivity to Frontend(FE) / North-South(N-S) Fabric: Each Cisco UCS C885A server is equipped with five PCIe Gen5 x16 FHHL slots, with four available for use as FE/N-S NICs to connect to the frontend fabric. These NICs can be 2 x NVIDIA BlueField-3 B3220 or B3240 DPUs or 4 x ConnectX-7 NICs. The FE fabric can be a dedicated fabric or an existing data center fabric and provide connectivity for AI workload management and orchestration components, access to high-speed storage access, network services, and when used for inferencing, it will also be used for inferencing requests from users and applications to models hosted on the C885A.

Note: Currently, the BlueField-3 B3240 FE NIC is only available for Cisco Hyperfabric AI deployments.

The Cisco UCS C885A M8 server use PCIe Gen5 for high-speed connectivity between GPUs and NICs, ensuring maximum data throughput.

Storage for Data-Intensive Workloads

AI training and fine-tuning are inherently data-intensive processes, requiring access to large datasets. The C885A M8 platform supports the following storage options to meet these demands:

● Local High-Speed NVMe: Each C885A M8 server can be configured with up to 16 PCIe Gen5 x4 2.5” U.2 NVMe SSDs, providing high-speed local storage. This capacity is beneficial for caching frequently accessed training data, storing intermediate results, or for checkpointing model parameters during long training runs, thereby reducing I/O latency and improving GPU utilization. Also, up to 2X PCIe3 x4 960GB M.2 NVMe drives are also included for booting the operating system.

● External Storage Integration: For access to shared datasets, the platform supports standard IP-based storage protocols such as NFS and object storage. In AI POD deployments, a dedicated storage NIC can be deployed to ensure high-bandwidth data transfer rates between the compute nodes and the external storage systems, preventing storage I/O from becoming a bottleneck to training performance. GPUDirect RDMA is also supported on the frontend NICs for high-speed, low latency connectivity between GPUs and external storage systems.

Building Block for Scale Units

The Cisco UCS C885A M8 server is a foundational compute option for AI POD's modular Scale Units that will be discussed in an upcoming section. Multiple Cisco UCS C885A servers are combined with specific models of Cisco Cloud Scale (CS) and Silicon One (S1) Nexus leaf switches to form distinct Scale Unit Types that enables customers to start with a cluster that meets their current needs (for example, 32, 64 or 128 GPU cluster), but that can scale to higher clusters (for example, 128, 256 or 512 GPUs) as their needs evolve, in a predictable and consistent manner.

Operating System Support

Cisco UCS C885A M8 server supports several Linux OS and Kubernetes, both vanilla K8s as well as Red Hat OpenShift. See the Cisco UCS C885A M8 Rack Server Spec Sheet for a current list of supported OS types and versions.

Cisco Intersight

Cisco UCS servers can be managed through Cisco Intersight, providing unified, cloud-managed lifecycle management, policy-driven configuration, and comprehensive health monitoring. Intersight management of C885 is currently limited to capabilities such as inventory display (CPU, GPU, Memory, NVMe Drives, and Network Cards), health and alerts management, KVM cross-launch, power management, and basic hardware metrics monitoring. Additional capabilities will be available in the future, including firmware management and automated OS installation.

For more information, see Appendix A - References.

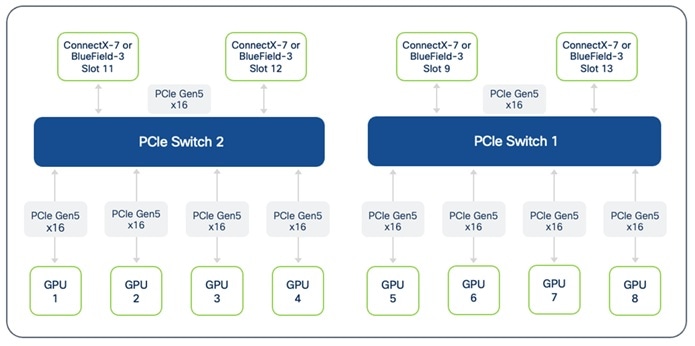

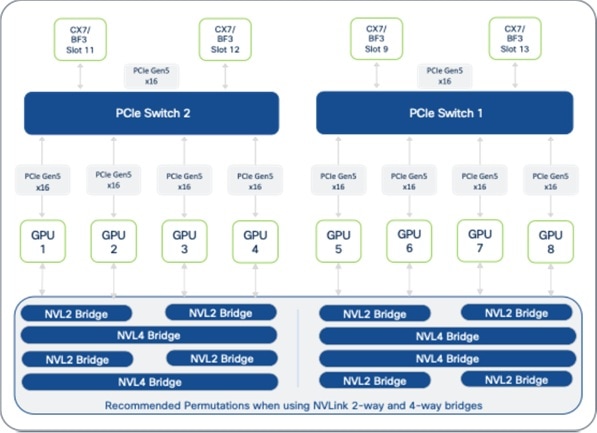

Cisco UCS C845A M8 Rack Server

The Cisco UCS C845A M8 is a flexible, 4-RU purpose-built AI platform that supports the full spectrum of AI use cases, from training and fine-tuning to distributed large model inferencing, including graphics acceleration in Virtual Desktop Infrastructure (VDI) deployments. The Cisco UCS C845A M8 is based on NVIDIA’s MGX architecture, specifically the PCIe Optimized 2-8-5-200 architecture when using 2 CPUs, 8 NVIDIA GPUs, 5 NVIDIA NICs, and 200Gbps of bandwidth per GPU to connect to backend (E-W) fabric.

Note: Cisco UCS C845A supports a maximum of 4 E-W NICs (1 NIC for every 2 GPUs) to connect the GPUs to the backend fabric and 1 N-S NIC to connect to the frontend fabric.

The Cisco UCS C845A M8 features two high-end AMD Turin (5th Gen) EPYC processors with up to 96 cores and 32 DDR5 RDIMM slots ( up to 4TB of memory). It is a versatile platform that can be scaled modularly to support 2, 4, 6 or 8 AMD or NVIDIA PCIe-based GPUs.

Dedicated, High-Speed GPU-to-GPU Interconnects

The high-speed interconnects within a Cisco UCS C845A are described below. They enable low-latency, high-bandwidth GPU-to-GPU connectivity to accelerate training and fine-tuning jobs:

● NVIDIA NVLink Bridges: On a system with NVIDIA GPUs, H200 NVL GPUs can be interconnected using 2-way NVLink (NVL2) or 4-way NVLink (NVL4) bridges. These bridges facilitate high-speed, direct GPU-to-GPU communication, ensuring efficient data and gradient synchronization. Note that NVIDIA L40S and RTX Pro 6000 GPUs do not support NVLink. RTPX Pro 6000 and L40S GPUs do not support NVLink.

● AMD Infinity Fabric Bridges: On a system with AMD MI210 GPUs, the GPUs are interconnected using 2-way or 4-way Infinity Fabric bridges, enabling direct GPU-to-GPU communication.

The direct GPU-to-GPU communication enabled by these dedicated interconnects bypasses the CPU and system memory for significantly faster data exchange and improved job completion times. It is important to adhere to recommended GPU population rules (see UCS 845A spec sheet in References section) to optimize NVLink/Infinity Fabric topology and prevent communication between GPUs on different PCIe switches from being routed through CPUs, which would introduce latency.

GPU Options

Each Cisco UCS C845A M8 server supports a flexible configuration of 2 to 8 PCIe GPUs (in even numbers, minimum 2), allowing you to choose the number of GPUs that match their specific use case and scale as workloads increase. GPUs cannot be mixed within a single server and must be procured from Cisco (requiring a unique SBIOS ID for CIMC). Table 3 lists the available GPU options on a UCS C845A M8 server, including capabilities such as NVLink and Multi-Instance GPU (MIG), and NVIDIA AI Enterprise (NVAIE) licensing requirements.

Table 3. Available PCIe GPU Options on Cisco UCS C845A M8 Server

| GPU Model |

Supported GPU Count |

Card/Slot Specs |

Memory, Power |

NVLink (NVL) / MIG Support |

NVAIE License |

| NVIDIA H100 NVL |

2, 4, 6, 8 |

FHFL, Single-Wide, 2-slot |

94GB, 400W |

NVL: Yes, 2-way MIG: Yes |

Included (5-Year License) |

| NVIDIA H200 NVL |

2, 4, 6, 8 |

FHFL, Single-Wide, 2-slot |

141GB, 600W |

Yes, 2/4-way MIG: Yes |

Included (5-Year License) |

| NVIDIA L40S |

2, 4, 6, 8 |

FHFL, Single-Wide, 2-slot |

48GB, 350W |

NVL: No MIG: No |

NOT Included |

| NVIDIA RTXP6000 |

2, 4, 6, 8 |

FHFL, Single-Wide, 2-slot |

96GB, 600W |

NVL: No MIG: Yes |

NOT Included |

| AMD MI210 |

2, 4, 6, 8 |

FHFL, Single-Wide, 2-slot |

64GB, 300W |

N/A |

N/A |

For up-to-date information on the GPU models available on the C845A, please see UCS C845A data and spec sheet links provided in the References section of this document.

External Network Connectivity for Scaling Training and Fine-Tuning Clusters

AI training and fine-tuning will typically require multi-node clusters, anywhere from 4, 8, 16 nodes to as high as 64, 128 nodes in enterprise deployments. To facilitate this scaling, Cisco UCS C845A M8 servers provide the following network interface options:

● NICs for connectivity to Backend (BE) / East-West (E-W) Fabric: Up to four PCIe Gen5 x16 FHHL slots are available for connectivity to East-West fabric (for example, NVIDIA ConnectX-7 (1x400G) or BlueField-3 B3140H SuperNIC (1x400G)). These are used for high-bandwidth, low-latency GPU Direct RDMA communication between GPUs residing on different C845A M8 servers connected to the same BE fabric.

● NICs for connectivity to Frontend (FE) / North-South (N-S) Fabric: One PCIe Gen5 x16 FHHL slot is available for connectivity to North-South fabric (for example, NVIDIA ConnectX-7 (2x200G) or BlueField-3 B3220 (2x 200G)). The FE fabric can be a dedicated fabric or an existing data center fabric and provides connectivity for AI workload management and orchestration components, access to high-speed storage, network services. When used for inferencing, inferencing requests from users and applications to models hosted on the Cisco UCS C845A will also use this NIC. The Cisco UCS C845A M8 server uses PCIe Gen5 for high-speed connectivity between GPUs and NICs, ensuring maximum data throughput.

Storage for Data-Intensive Workloads

AI training and fine-tuning are inherently data-intensive processes, requiring access to large datasets. The Cisco UCS C845A M8 platform supports the following storage options to meet these demands:

● Local High-Speed NVMe and SATA: Each Cisco UCS C845A M8 server can be configured with up to 20 E1.S NVMe SSDs for high-speed cache and data storage. These E1.S drives are available in capacities such as 1.9TB, 3.8TB, and 7.6TB. This capacity is beneficial for caching frequently accessed training data, storing intermediate results, or for checkpointing model parameters during long training runs, thereby reducing I/O latency and improving GPU utilization. Additionally, up to two M.2 SATA SSDs (240GB or 960GB) are available for booting the operating system, with an optional hardware RAID controller.

● External Storage Integration: For access to vast, shared datasets, the platform supports standard IP-based storage protocols such as NFS and object storage. In AI POD deployments, a dedicated storage NIC can be deployed to ensure consistent, high-bandwidth data transfer rates between the compute nodes and the external storage systems, preventing storage I/O from becoming a bottleneck to training performance. GPUDirect RDMA is also supported on the frontend NICs for high-speed, low latency connectivity between GPUs and external storage systems.

Building Block for Scale Units

The Cisco UCS C845A M8 server is also a foundational compute option for AI POD's modular Scale Units that will be discussed in a later section. Its modular design allows for flexible scaling of GPU count within a server and enables the creation of clusters tailored to specific AI needs. Multiple C845A servers can be combined with specific models of Cisco Nexus leaf switches to form distinct Scale Unit Types that enables customers to start with a cluster that meets their current needs, but that can scale to larger GPU clusters as their needs evolve, in a predictable and consistent manner.

Operating System Support

Cisco UCS C845A M8 server supports several Linux OS and Kubernetes. Supported OS include Ubuntu Server 22.04 LTS, 24.04 LTS, RedHat Enterprise Linux >9.4, RedHat Enterprise Linux CoreOS 4.16, and Rocky Linux 9.5. See Cisco UCS C845A M8 server spec sheet for an up-to-date list of supported OS types and versions.

Cisco Intersight

Cisco UCS servers can be managed through Cisco Intersight, providing unified, cloud-managed lifecycle management, policy-driven configuration, and comprehensive health monitoring. Intersight management of C845A includes capabilities such as server claiming, inventory display (CPU, GPU, Memory, NVMe Drives, and Network Cards), health and alerts management, KVM cross-launch, and power management. Additional capabilities will be included in the future, including real-time inventory updates, OS installation using KVM, tunneled KVM, connected TAC support, firmware management (including GPUs), advanced hardware monitoring, HCL, advisories, automated OS installation, and policy-driven management.

For more information, see Appendix A - References.

Network Fabric: Cisco Nexus 9000 Series Switches and Nexus Dashboard

In distributed training and fine-tuning, the network fabric plays a crucial role in providing high-bandwidth, low-latency communication to interconnect dense GPU servers like the UCS C885A and C845A. The Cisco Nexus 9000 series is designed to meet these demanding requirements, serving as the high-performance foundation for both the leaf and spine layers of the backend and frontend fabrics in the architecture.

The AI POD architecture leverages the following key platforms:

● Cisco Nexus 9332D-GX2B: A 1RU, 32-port 400GbE switch based on Cisco Cloud Scale technology, ideally suited for leaf role.

● Cisco Nexus 9364D-GX2A: A 2RU, 64-port 400GbE switch based on Cisco Cloud Scale technology, ideally suited for larger leaf or spine roles.

● Cisco Nexus 9364E-SG2 is a 2RU, 64-port 800GbE (or 128 x 400GbE ports) switch based on Cisco Silicon One technology. Designed for next-generation fabrics, it is available in QSFP-DD and OSFP form factors with dual-port transceivers for 400GbE connectivity, making it suitable for both leaf and spine roles.

All of these Nexus switches provide the port density, switching capacity, and advanced features necessary for AI/ML workloads, including support for RDMA over Converged Ethernet (RoCE), hardware-accelerated telemetry, and advanced load-balancing mechanisms.

Cisco Nexus 9332D-GX2B Switch

The Cisco Nexus 9332D-GX2B is a 1RU switch engineered for high-density 400GbE leaf (or spine) deployments. With 32 flexible QSFP-DD ports, it provides the critical connectivity for dense GPU servers like the Cisco UCS C885A and Cisco UCS C845A. The switch delivers 25.6 Tbps of forwarding throughput and features a 60 MB shared buffer to manage traffic bursts and prevent packet loss in latency-sensitive AI environments.

![]()

Cisco Nexus 9364D-GX2A Switch

For larger-scale deployments, the Cisco Nexus 9364D-GX2A is a 2RU switch that doubles the port density to 64 x 400GbE QSFP-DD ports, making it suitable for either a high-density leaf or a compact spine role. It provides 51.2 Tbps of throughput and a deep 120 MB shared buffer to maintain lossless performance across more expansive AI PODs.

Cisco Nexus 9364E-SG2 Switch

Based on Cisco Silicon One technology, the Cisco Nexus 9364E-SG2 is a 2RU switch designed for next-generation leaf and spine architectures. It features 64 native 800GbE ports, which can be configured to 128 ports of 400GbE using dual-port transceivers for maximum density. With 51.2 Tbps of throughput and a 256 MB on-die packet buffer, it is particularly well-suited for AI/ML, offering advanced congestion-management mechanisms and enhanced telemetry.

Common Capabilities for AI/ML Fabrics

All of these Nexus platforms provide the foundational features essential for building a high-performance AI network fabric:

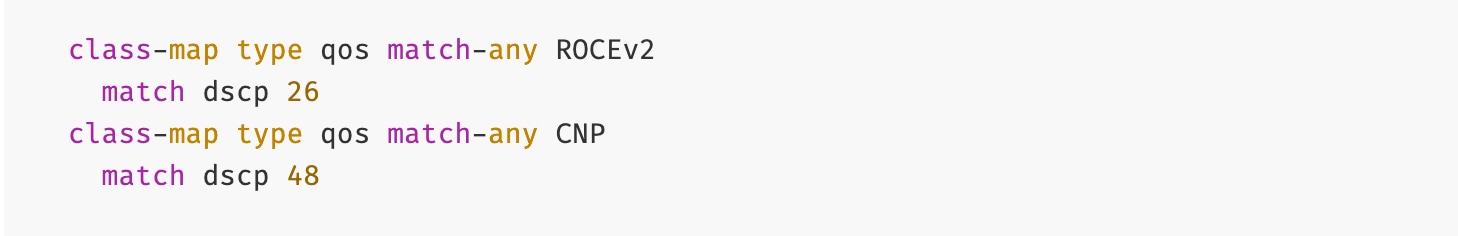

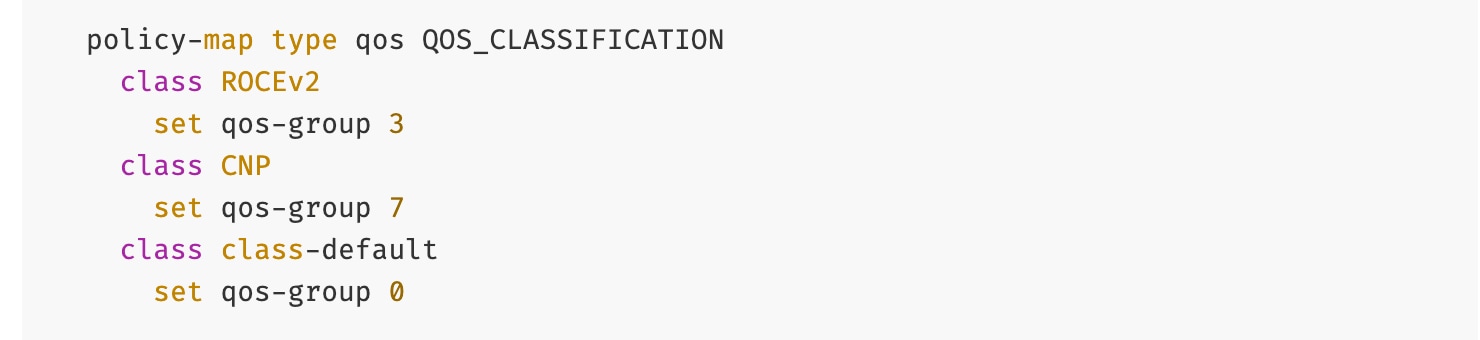

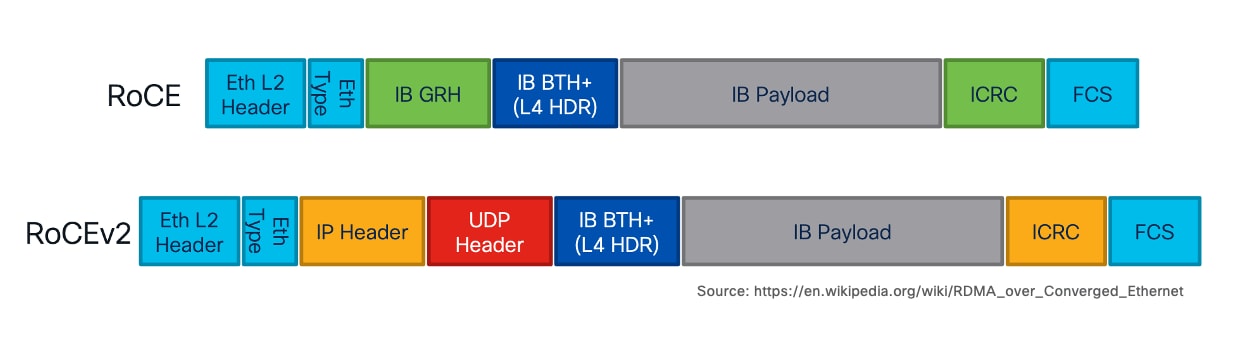

● Lossless Transport for RDMA: They fully support RDMA over Converged Ethernet (RoCEv2), providing the low latency, high-bandwidth, and congestion-management necessary for distributed training.

● Advanced Fabric Features: They support modern network architectures using VXLAN EVPN and segment routing, allowing for the creation of flexible, scalable, and automated multi-tenant network infrastructures.

● Intelligent Traffic Management: These switches are particularly well-suited for AI/ML applications, supporting intelligent traffic management with features like Dynamic Load Balancing (DLB and advanced telemetry.

● Centralized Management: The entire fabric, including all leaf and spine switches, is managed through Cisco Nexus Dashboard, which provides a single point of control for automation, monitoring, and analytics.

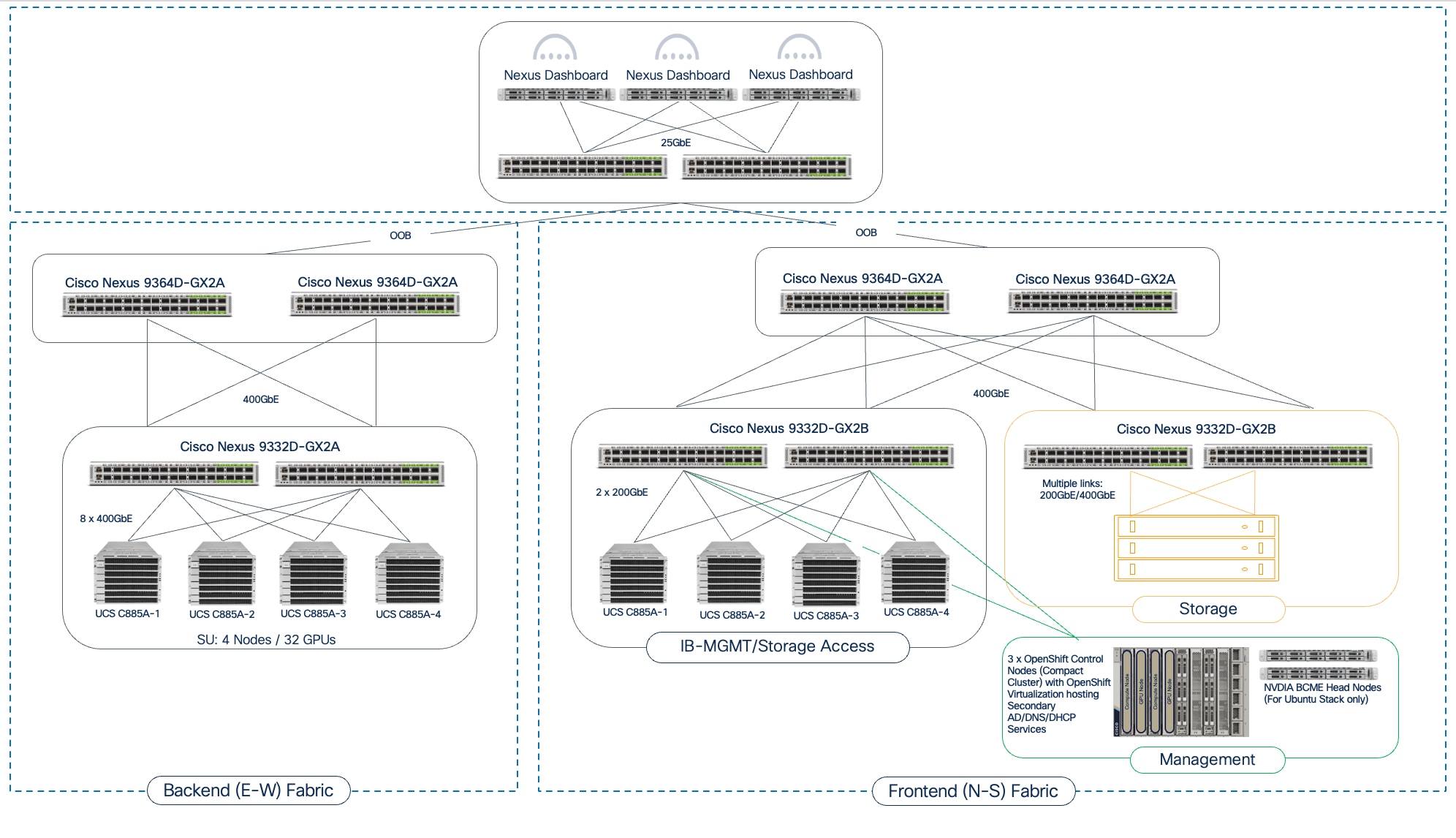

Cisco Nexus Dashboard

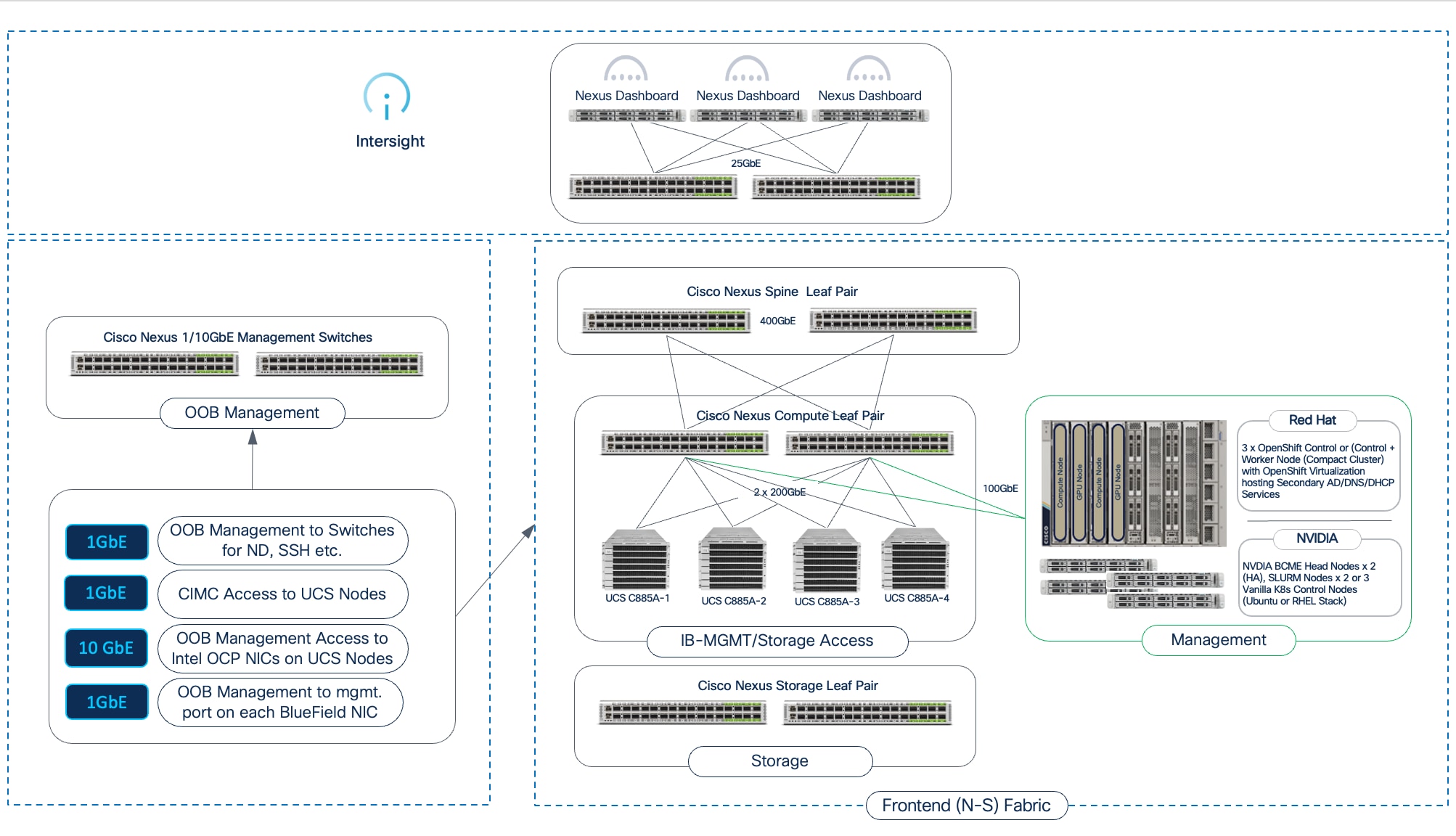

The AI POD design for training and fine-tuning incorporates two distinct network fabrics: a backend (East-West) fabric for high-speed GPU-to-GPU connectivity between UCS GPU servers and a frontend (North-South) fabric for connectivity to management, storage, services, and user/application traffic. Cisco Nexus Dashboard (ND) provides a unified platform to simplify the deployment, management, and operations of these critical fabrics in the Cisco AI POD design.

The key capabilities that Nexus Dashboard provides for an AI POD include:

● Centralized Fabric Management

The latest release of ND 4.1 also consolidates Nexus Dashboard Fabric Controller, Orchestrator, and Insights into a single unified and cohesive platform with a single menu and API endpoint. This integration simplifies lifecycle management of multiple data center fabrics (both AI and non-AI) through one interface.

In the AI POD design, the same Nexus Dashboard is used to manage both AI fabrics (backend, frontend), providing operational consistency and simplifying the overall management of this environment.

● AI/ML Fabric Deployment and Blueprints

Nexus Dashboard provides built-in blueprints for flexible deployment of VXLAN EVPN-based or IP BGP fabrics, including templates, customized for enterprise AI deployments. These are pre-defined, best-practice templates to automate and accelerate the deployment of AI fabrics.

In the AI POD design, AI/ML templates are used to deploy both fabrics in a matter of minutes.

● Deep Visibility and Day-2 Operations

Nexus Dashboard offers comprehensive monitoring capabilities, including hardware-assisted telemetry, and real-time per flow analytics. ND also offers several day-2 operational capabilities such as centralized fabric upgrades and proactive fabric management by providing aggregated views of anomalies and advisories impacting the fabric, including an analysis hub with multiple troubleshooting tools.

● Scalable, Multi-Fabric Management

The Nexus Dashboard platform itself is designed for scale and resilience, allowing it to manage environments of any size. ND can be deployed as a physical or virtual appliance cluster (1, 3, or 6 nodes), including on the latest Cisco UCS M8-based appliances or in public clouds like AWS. For massive-scale deployments, several Nexus Dashboard clusters can be federated, providing a global view and management across multiple data centers and sites from a single pane of glass.

In the AI POD design, a single ND cluster, consisting of 3 physical nodes are used to manage both the backend and frontend fabrics.

● Automation and API Capabilities

ND offers robust automation with Infrastructure-as-Code (IaC) capabilities, enabling accelerated deployments and simplified workflows. A unified API endpoint across for multiple fabrics facilitates single API automation for fabric deployment, configuration, and ongoing management.

For more information, see Appendix A - References.

Storage

AI training and fine-tuning workloads are inherently data-intensive, requiring performant, scalable and reliable storage. For Cisco AI PODs, additional storage considerations include:

● Incremental scalability to meet evolving enterprise AI needs

● Flexibility to leverage established enterprise storage systems and newer solutions purpose-built for AI workloads

● Operational consistency across AI and non-AI environments

To meet these requirements, the Cisco AI POD solution integrates and validates proven enterprise storage solutions and Software-Defined Storage (SDS) solutions from industry-leading partners. The design ensures that these solutions provide the following core capabilities:

● High-Speed Connectivity: Storage systems typically connect to the frontend fabric in an AI network and require high-speed data connectivity with the compute nodes running AI workloads. In the AI POD design, this connectivity can be done using either dedicated or shared NICs. These NICs are typically 2 x 200GbE NICs, operating at 100Gbps or 200Gbps to ensuring high-bandwidth access to storage. NVIDIA recommends 12.5Gbps or more per GPU for storage access.

● GPU Direct Storage (GDS) Support: The AI POD design leverages GDS, which enables direct data transfer between storage and GPU memory, bypassing the system CPU and memory. This can significantly reduce latency and improve throughput for data-intensive AI operations, particularly for large datasets and workloads.

● Scalability and Flexibility: Integrated storage solutions should offer flexible scalability in both capacity and performance, allowing enterprises to grow their data stores as AI projects grow without disruption.

● Optimized Data Access: The solutions should support optimized data access like NFS over RDMA-that provide large GPU clusters with efficient parallel access to storage.

To deliver the above capabilities, Cisco has partnered with three key enterprise storage partners. The storage solutions validated in the AI POD solution are:

● NetApp Storage – NetApp offers a unified data platform with robust data management capabilities, making it a reliable choice for enterprises leveraging existing investments. NetApp's All-Flash FAS (AFF) systems, powered by ONTAP software, provide high-performance, all-flash NVMe-based storage. They support NFS, NFS over RDMA, object store and are validated for GDS, ensuring low-latency, high-throughput data access directly to GPUs.

ONTAP provides enterprise-class data management features like deduplication, compression, drive encryption, snapshots, replication, and backup, crucial for protecting valuable AI datasets and models. This extends existing data management practices to AI workloads, ensuring operational consistency. NetApp FlexGroup volumes are designed to handle massive, high-performance datasets, making them ideal for AI training workloads. Unlike traditional volumes, FlexGroup automatically distributes files and metadata across multiple constituent volumes and storage nodes, enabling parallel I/O operations. NetApp's scale-out architecture allows for seamless growth in both capacity and performance, adapting to evolving AI project needs.

NetApp’s latest AFX storage system is based on a next‑generation storage architecture that evolves the ONTAP storage model into a disaggregated high-performance NAS solution. AFX system delivers all the performance benefits of parallel file systems and niche AI storage solutions, but on an enterprise-grade platform that is simple, secure, and fully integrated. Unlike solutions that require proprietary file system clients, AFX uses standard file and object protocols, including parallel NFS (pNFS) for extreme performance and AWS S3-compatible object storage for flexibility. This integrated platform means that all of your applications can use AFX without installing custom clients that introduce instability, security risks, or operational complexity.

● Pure Storage - Pure Storage offers high-performance, all-flash solutions optimized for AI/ML in the FlashBlade//S systems. A scalable, all-flash, scale-out file and object storage platform designed for high-throughput, low-latency access to unstructured data. Its modular architecture allows for incremental scaling of both performance and capacity, making it ideal for large AI datasets and parallel access. FlashBlade//S supports high-speed 400GbE network interfaces and GDS integration.

◦ Portworx by Pure Storage - Portworx unlocks the value of Kubernetes data at enterprise scale. It’s a fully integrated container data platform that Automates, Protects, and Unifies modern applications across hybrid and multi-cloud, works with any underlying storage (on-prem or cloud) and any Kubernetes distribution, and simplifies developer actions and platform data management:

- Automate: Portworx automates Kubernetes data management end-to-end, boosting efficiency and time-to-market across DevOps/MLOps. It abstracts heterogeneous on-prem/cloud storage and delivers a cloud operating model with self-service storage/database services.

- Protect: Architect app-aware resiliency from Day 1 with synchronous DR (zero-data-loss targets) and automated Day-2 operations. Encrypt at cluster or storage-class scope, enforce RBAC, and use policy-driven backups with immutability/portability to counter ransomware.

- Unify: Unify Kubernetes storage by removing per-array CSI dependencies so platforms stay fully declarative across hybrid/multi-cloud. Manage data for containers and VMs in one solution—reducing VM licensing overhead and preserving future flexibility.

● VAST Storage - VAST Data's Universal Storage platform is an SDS platform designed for exabyte-scale and all-flash performance geared for AI workloads. Its Disaggregated Shared-Everything (DASE) architecture separates compute from storage, allowing independent scaling of resources to meet dynamic AI workload demands. This architecture provides high throughput and low latency for large datasets.

VAST Data Servers (EBox) can be deployed on Cisco UCS C225 M8 servers, leveraging Cisco's compute platform. When deployed on UCS C225s, the VAST Data Servers can be managed through Cisco Intersight, providing unified visibility and operational consistency for the compute layer of the storage solution within the broader AI POD infrastructure. VAST supports high-speed RDMA access and GDS.

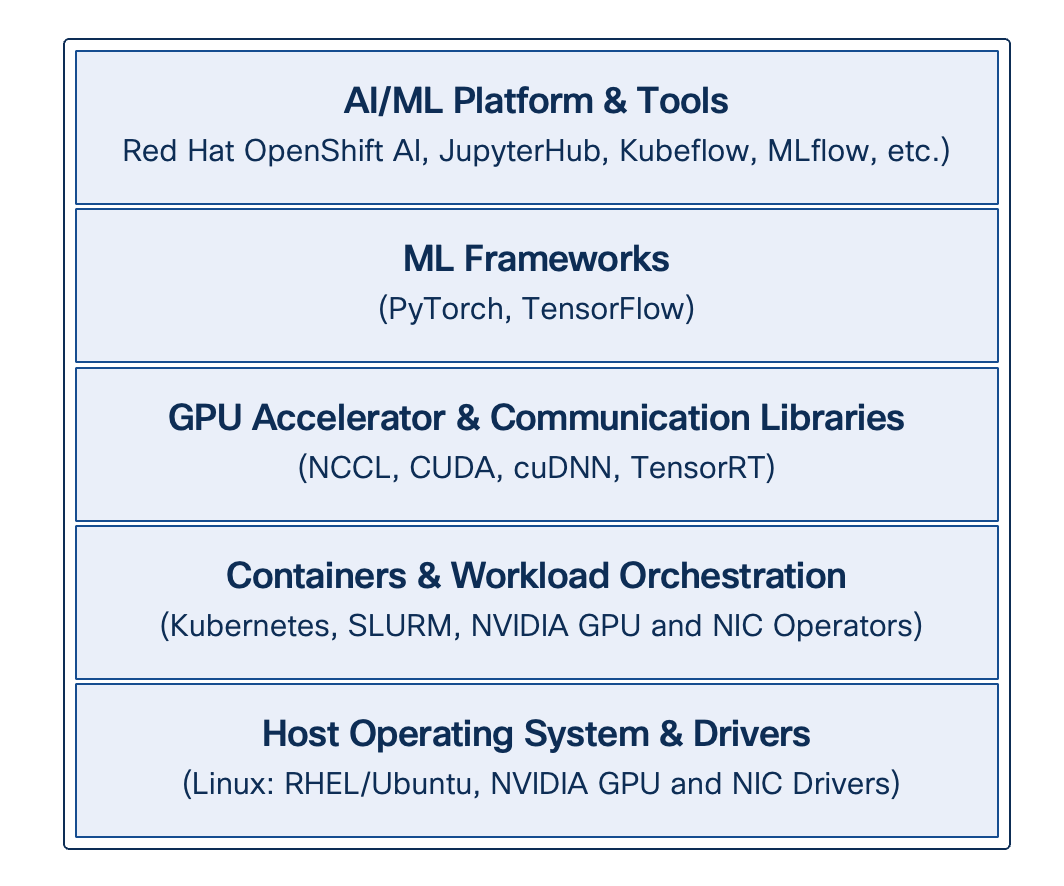

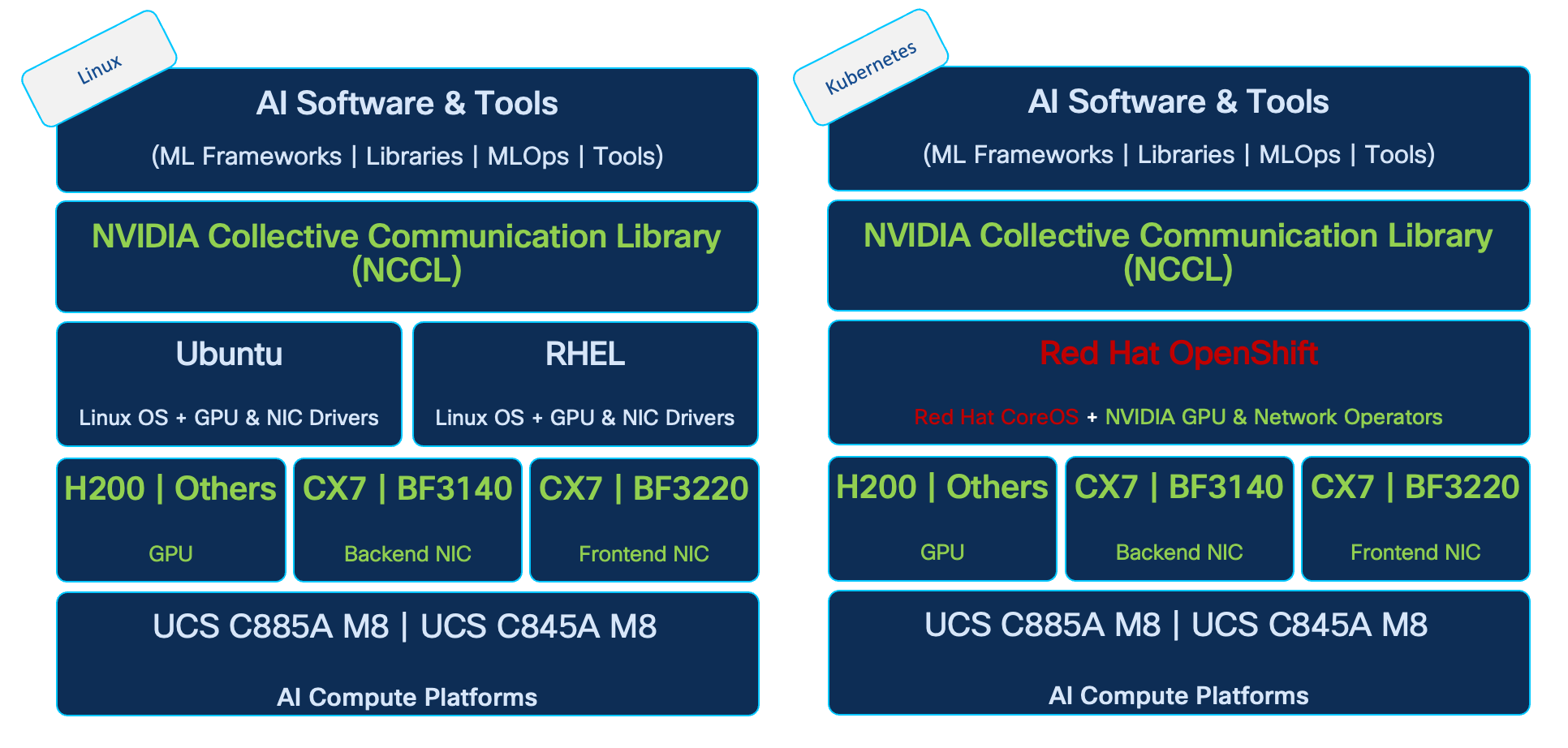

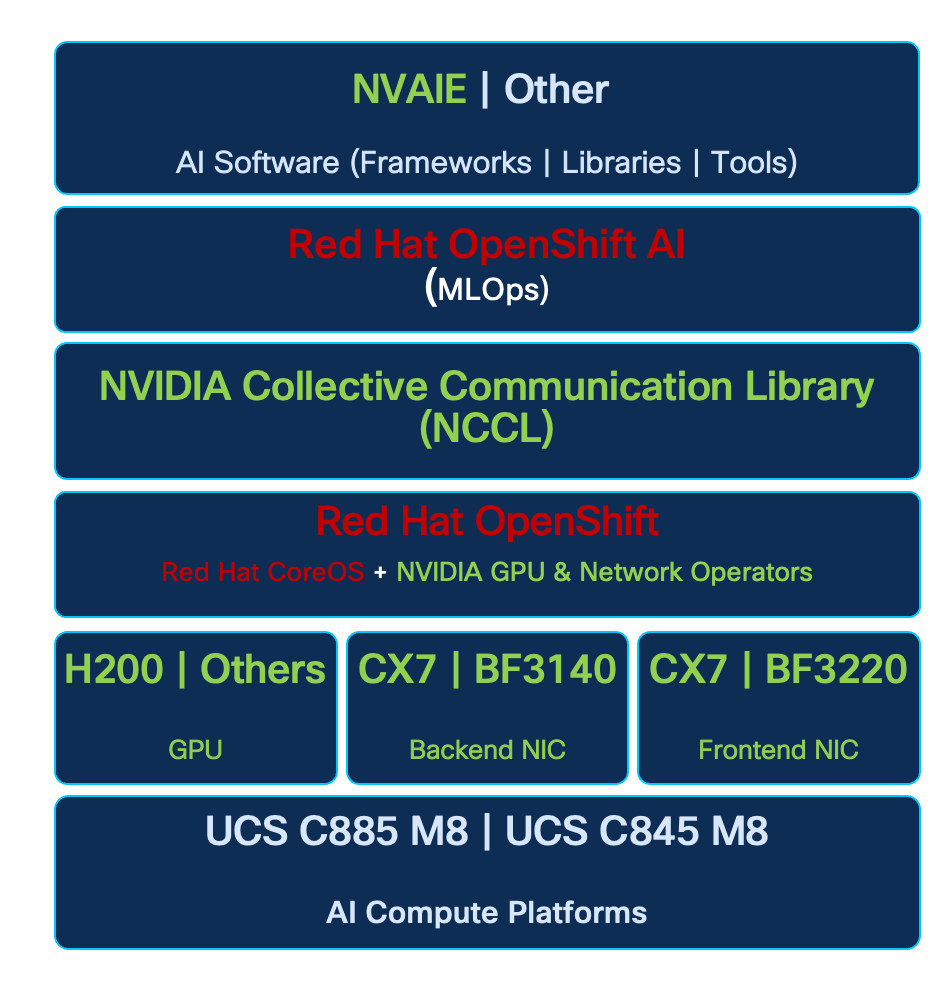

AI Software Stack

A comprehensive and integrated software stack is essential for efficiently deploying, managing, and executing AI/ML workloads on the underlying infrastructure. Cisco AI PODs support a robust software ecosystem designed for high-performance computing and AI-specific tasks:

● Operating System: Cisco AI PODs support standard Linux distributions such as Ubuntu and Red Hat Enterprise Linux as the base OS on Cisco’s GPU-dense AI platforms (For a complete list of support distributions, see Cisco UCS Hardware Compatibility List (HCL) tool). For training and fine-tuning workloads, enterprises can choose to run AI workloads natively on these Linux distributions or combine it with a workload orchestration and management layer such as K8s.

● Workload Orchestration and Management: AI PODs integrate with workload managers (for example, SLURM, or Kubernetes’ native scheduling) to provide effective scheduling and orchestration of complex training jobs. Enterprise-grade Kubernetes platforms (for example, Red Hat OpenShift), serving as the de facto orchestration layer for AI/ML applications and services, can provide operational consistency across diverse AI workload environments, from inferencing to training and fine-tuning.

● NVIDIA Collective Communication Library (NCCL): Crucial for distributed training and fine-tuning, NCCL optimizes inter-GPU communication, ensuring efficient data exchange and synchronization across the GPU cluster for collective operations.

● NVIDIA AI Enterprise (NVAIE): This suite provides the core AI software libraries, frameworks (for example, PyTorch, TensorFlow), and tools necessary for developing and running high-performance AI applications. NVAIE ensures optimized performance and compatibility with NVIDIA GPUs. AI/ML frameworks can seamlessly leverage NCCL for optimal inter-GPU connectivity in distributed training and fine-tuning.

MLOps

In Red Hat OpenShift deployments, Red Hat OpenShift AI can serve as a robust, Kubernetes-native MLOps platform purpose-built for enterprise AI/ML workloads, addressing the evolving demands of regulated industries like finance, healthcare, and public sector. By building on OpenShift's hardened infrastructure, it unifies the AI lifecycle—from secure data ingestion and collaborative development in isolated projects to automated KFP pipelines, modular model customization with Training Hub and Kubeflow Trainer, distributed Ray-based training with GPU auto-scaling, and production-grade model serving via KServe with vLLM optimizations, llm-d distribution, and RAG capabilities. New AI safety features, such as guardrails and detectors for LLM filtering, enhance governance, ensuring ethical deployment and compliance with frameworks like NIST or CIS.

Enterprises gain substantial advantages:

● Scalability and Efficiency: Resource management with Kueue queuing, hardware profiles, and telemetry minimizes waste, enabling cost-effective handling of large datasets and models in hybrid environments.

● Security and Compliance: Inherited defense-in-depth (RBAC, SCCs, auditing) plus AI-specific guardrails reduce risks, avoiding custom implementations prone to gaps.

● Multi-Tenancy and Collaboration: Project isolation and RBAC support team workflows without overlays, fostering innovation while enforcing least privilege.

● Operational Reliability: Monitoring, versioned artifacts, and auto-scaling ensure reproducibility and uptime, with support for disconnected operations.

Management

The AI POD architecture takes a unified approach to management to ensure operational consistency and simplified scaling. The solution leverages two platforms for managing and operating the key infrastructure subsystems:

● Network Fabric Management: Cisco Nexus Dashboard offers unified control and visibility for the dual networks (backend and frontend fabric) in an AI training deployment. It streamlines network provisioning, operations, and troubleshooting, ensuring consistent network performance and health. As a centralized point of control, it also provides a single API endpoint for automating the end-to-end network infrastructure. Furthermore, Nexus Dashboard can manage multiple AI POD training or inferencing fabrics and can be federated with other clusters, providing a consistent management plane across both AI and non-AI data center environments.

● Compute Infrastructure Management: Cisco Intersight provides centralized, cloud-managed lifecycle management for all Cisco UCS compute resources, including the GPU nodes and management cluster servers. This SaaS-based platform (with an on-premises appliance option) enables policy-driven configuration, health monitoring, firmware management, and automation, simplifying compute operations. Support for the Cisco UCS C8xx series within Intersight is continually evolving, and it is recommended to consult the latest documentation for current capabilities.

The two platforms together, Cisco Nexus Dashboard for the network and Cisco Intersight for the compute, provide a complete, end-to-end management framework for the AI POD architecture.

Observability

Unified, end-to-end visibility are critical for operationalizing AI at scale within the enterprise in order to simplify complex infrastructure and to identify and resolve issues faster when they occur.

Cisco Splunk Observability Cloud delivers comprehensive, full-stack observability across the entire AI/ML environment, from infrastructure (compute, network, storage) to applications and workloads. This integrated visibility helps eliminate management silos, enables proactive monitoring, facilitates rapid root cause analysis, and supports performance optimization.

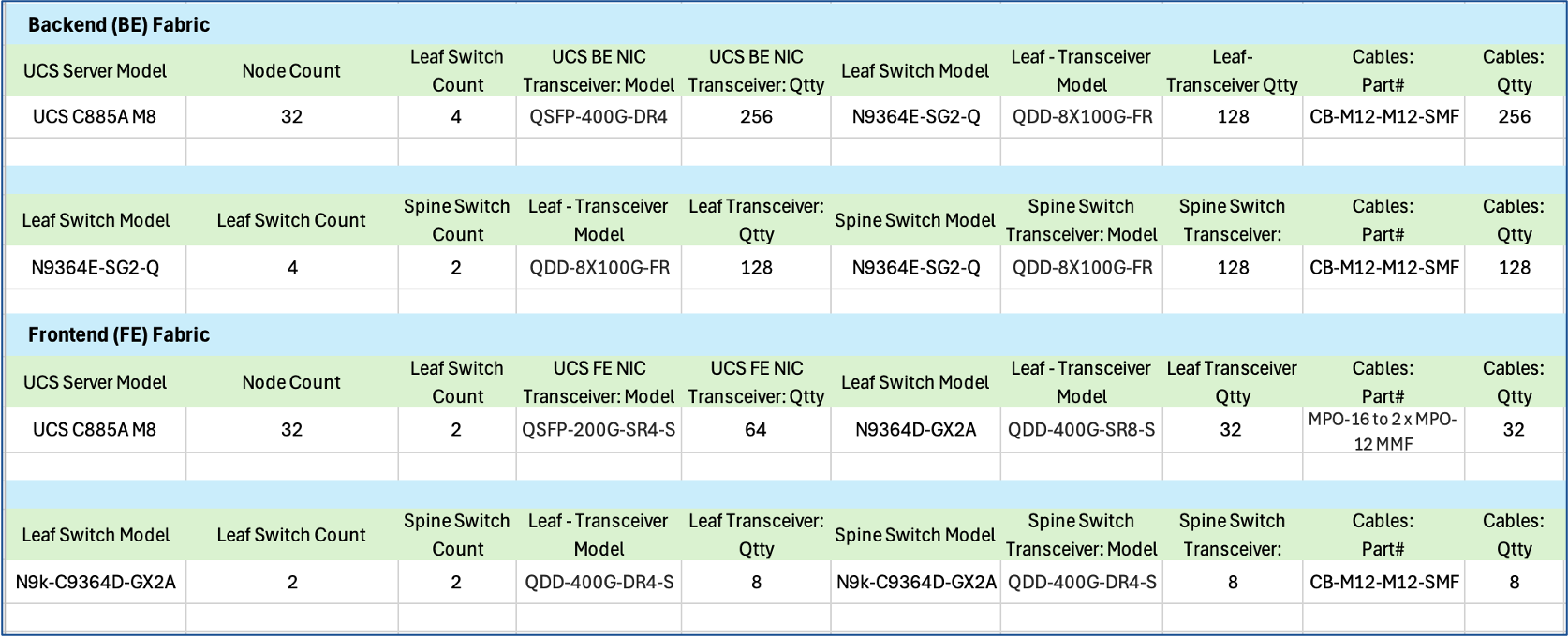

Dual Fabrics: Backend and Frontend Networks

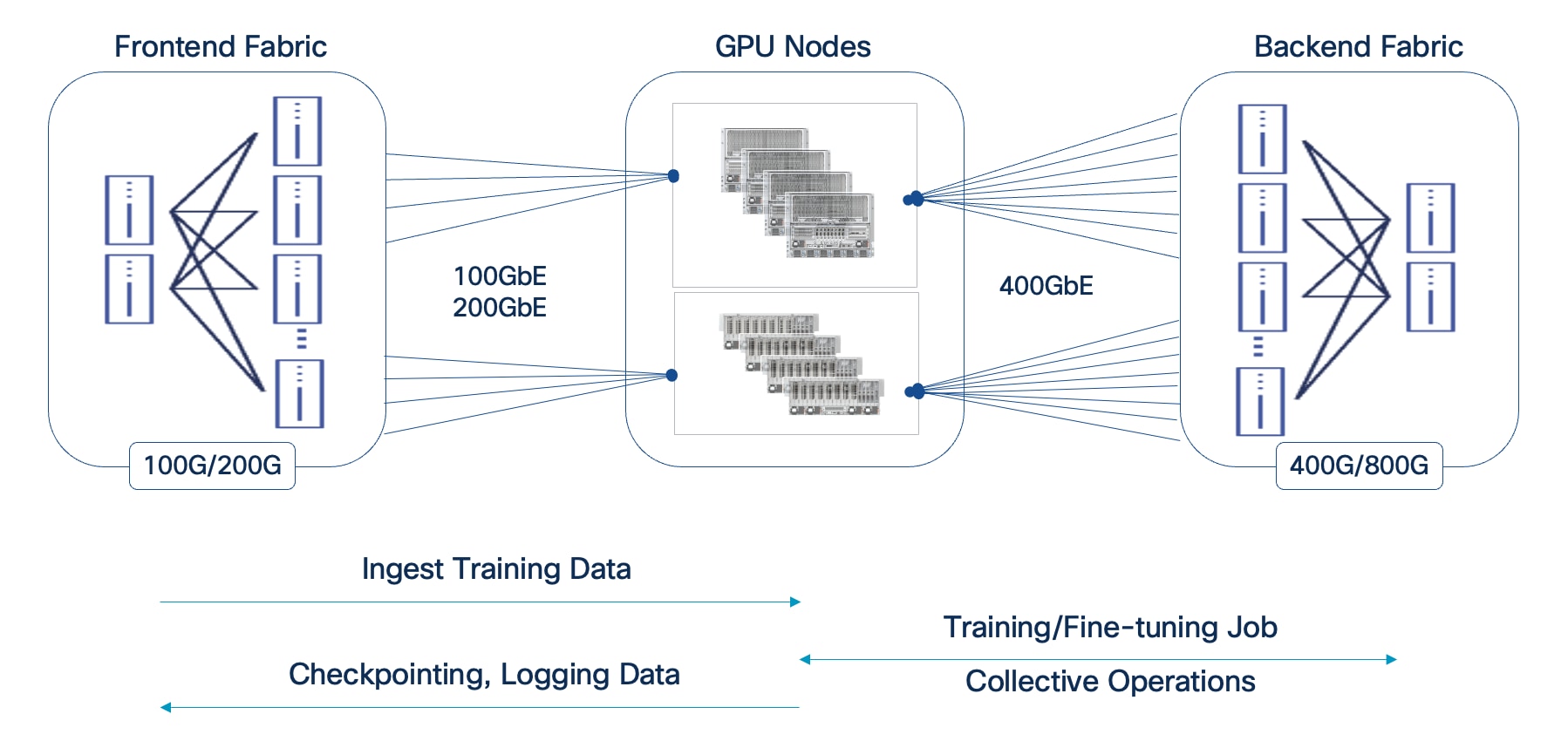

Cisco AI POD architecture is a dual-fabric design to meet the stringent performance requirements of distributed training and fine-tuning AI workloads. Distributed training and fine-tuning have fundamentally different communication patterns and performance requirements from those for management, storage access, or user/application traffic.

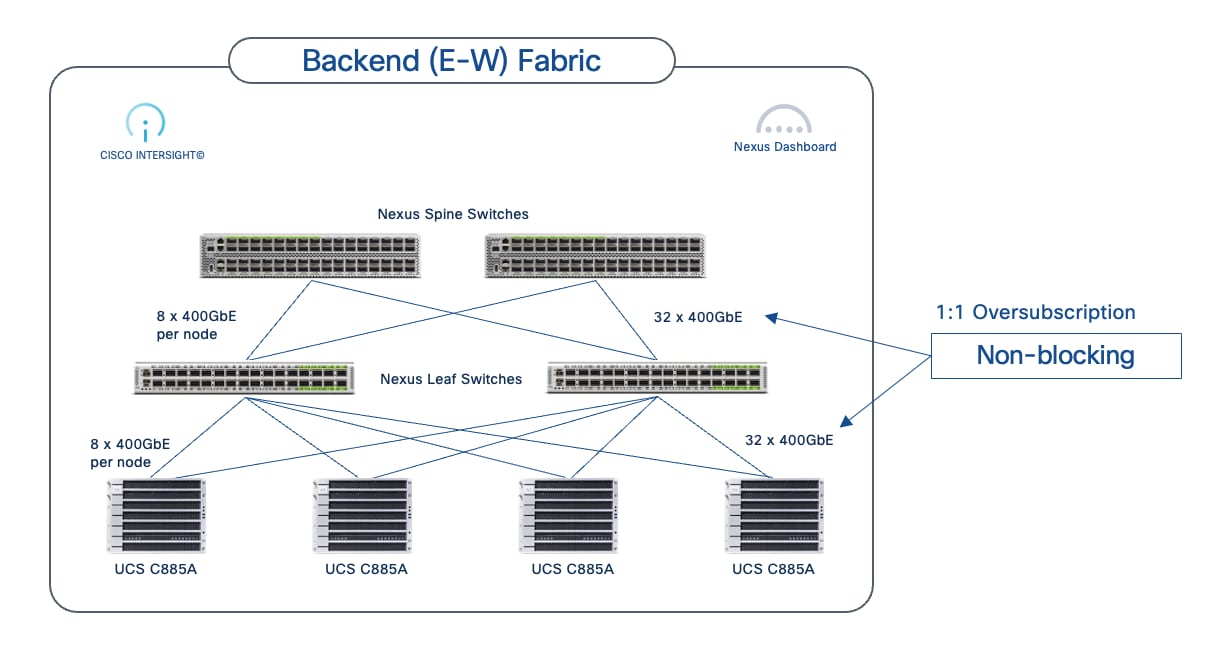

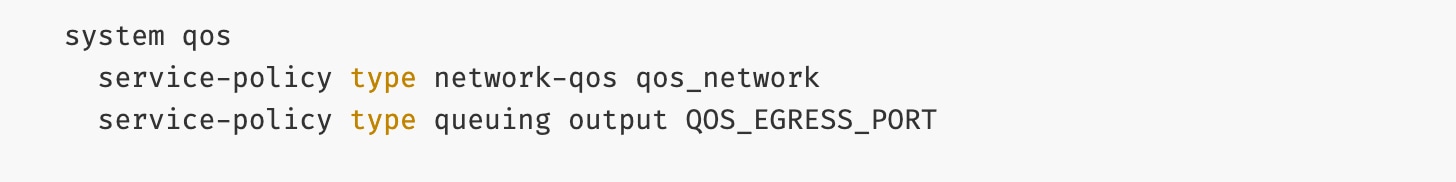

● Backend (East-West) Fabric: This fabric is a dedicated, isolated, high-performance backend fabric for low latency, high-throughput, and lossless GPU-to-GPU communication using GPU Direct RDMA (RoCEv2). This isolation ensures that collective operations and data synchronization between GPUs during training and fine-tuning are not impacted by other network traffic, directly contributing to faster Job Completion Times (JCTs).

● Frontend (North-South) Fabric: This fabric handles management traffic, high-speed storage access including GPU Direct Storage, and seamless integration with the broader enterprise data center network. It provides robust connectivity for accessing enterprise data, data center services as well as user/application traffic. The separation of BE and FE fabrics ensures consistent and reliable connectivity for all supporting services without compromising the performance of the core AI workload.

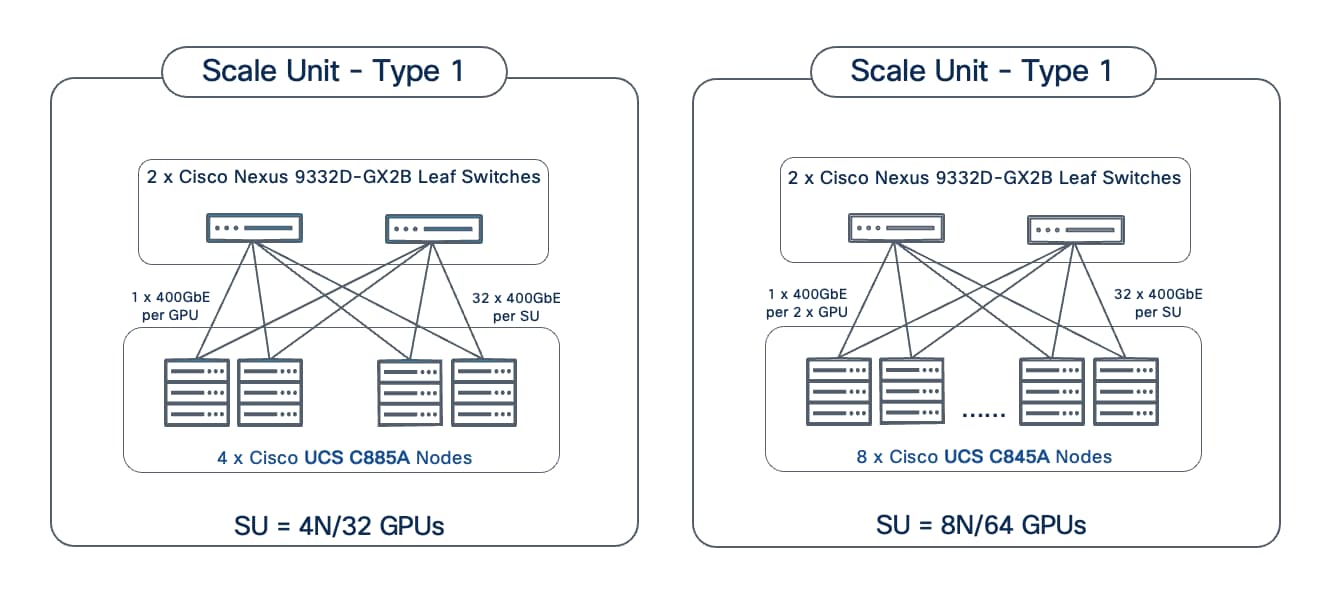

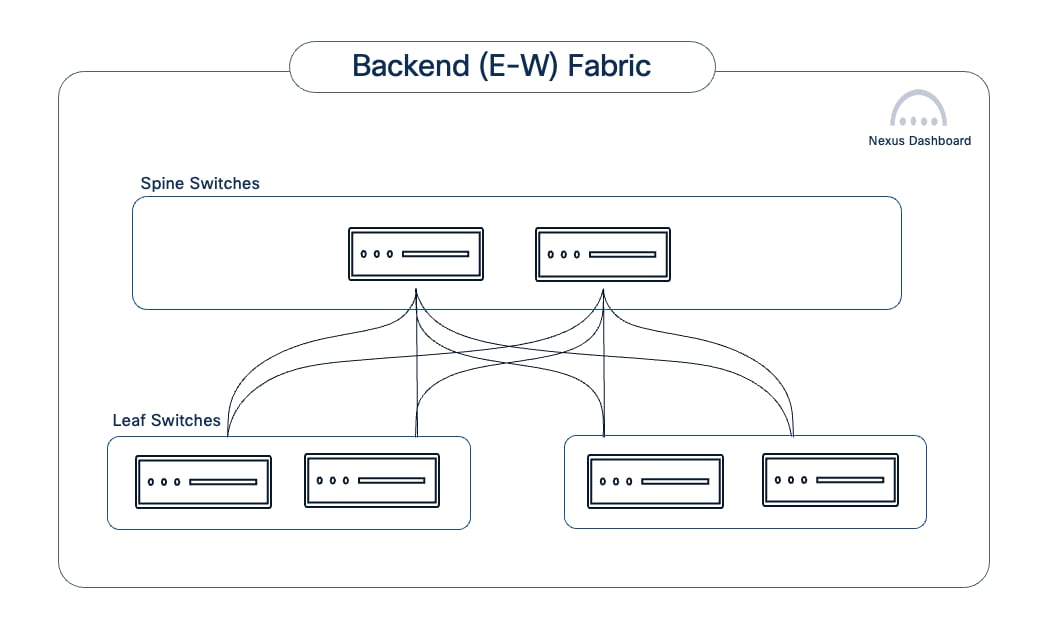

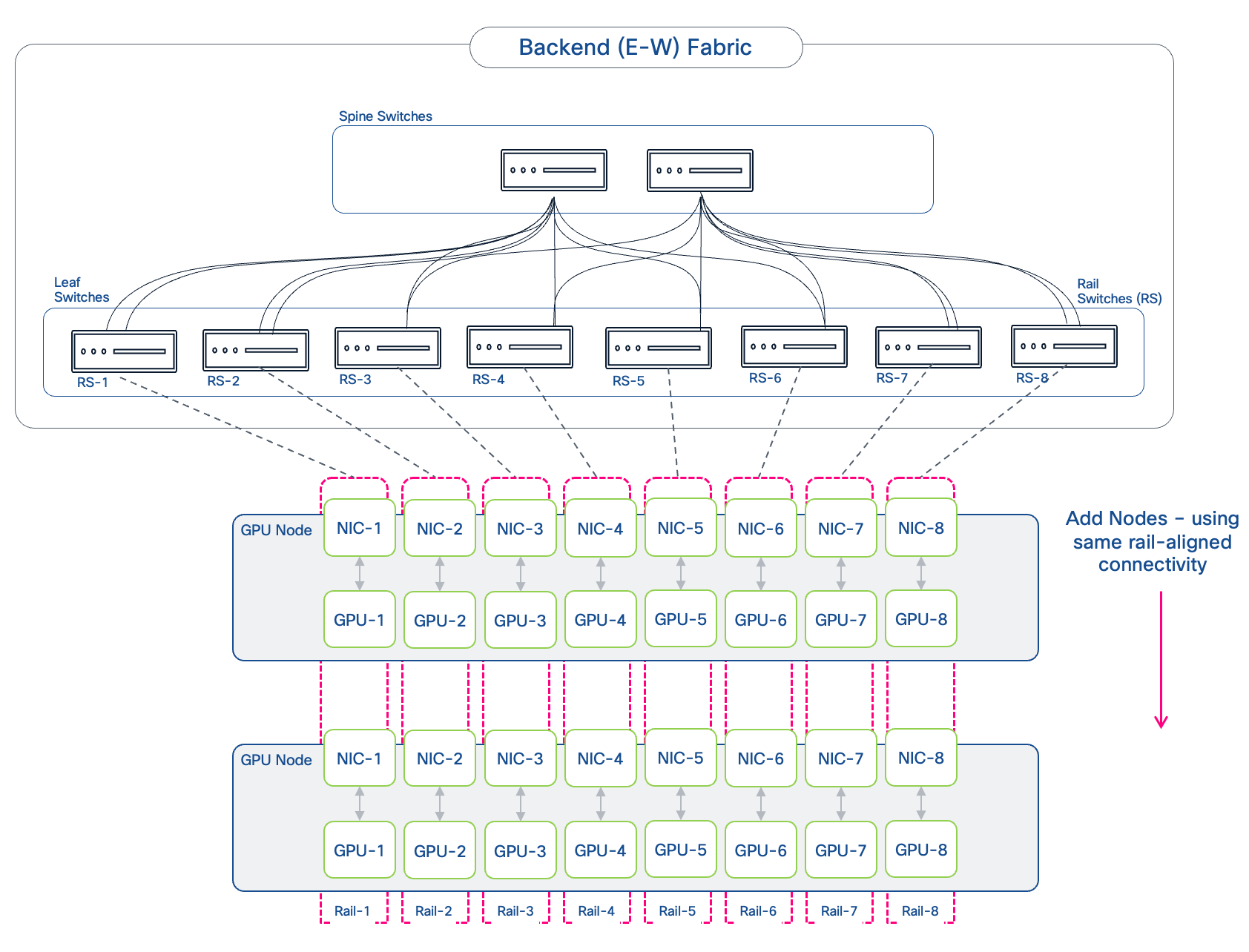

Modular and Scalable Design: Scale Units and Scale Unit Types

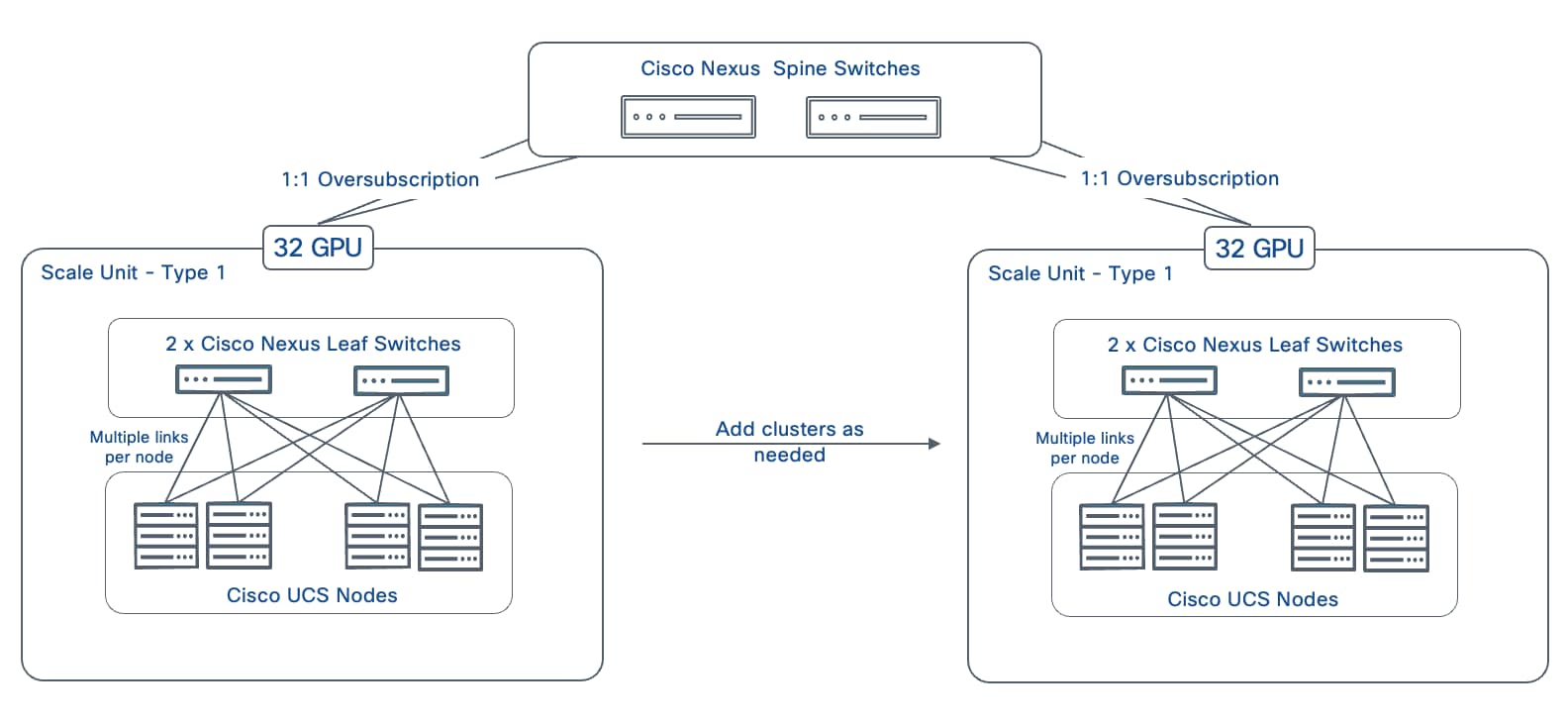

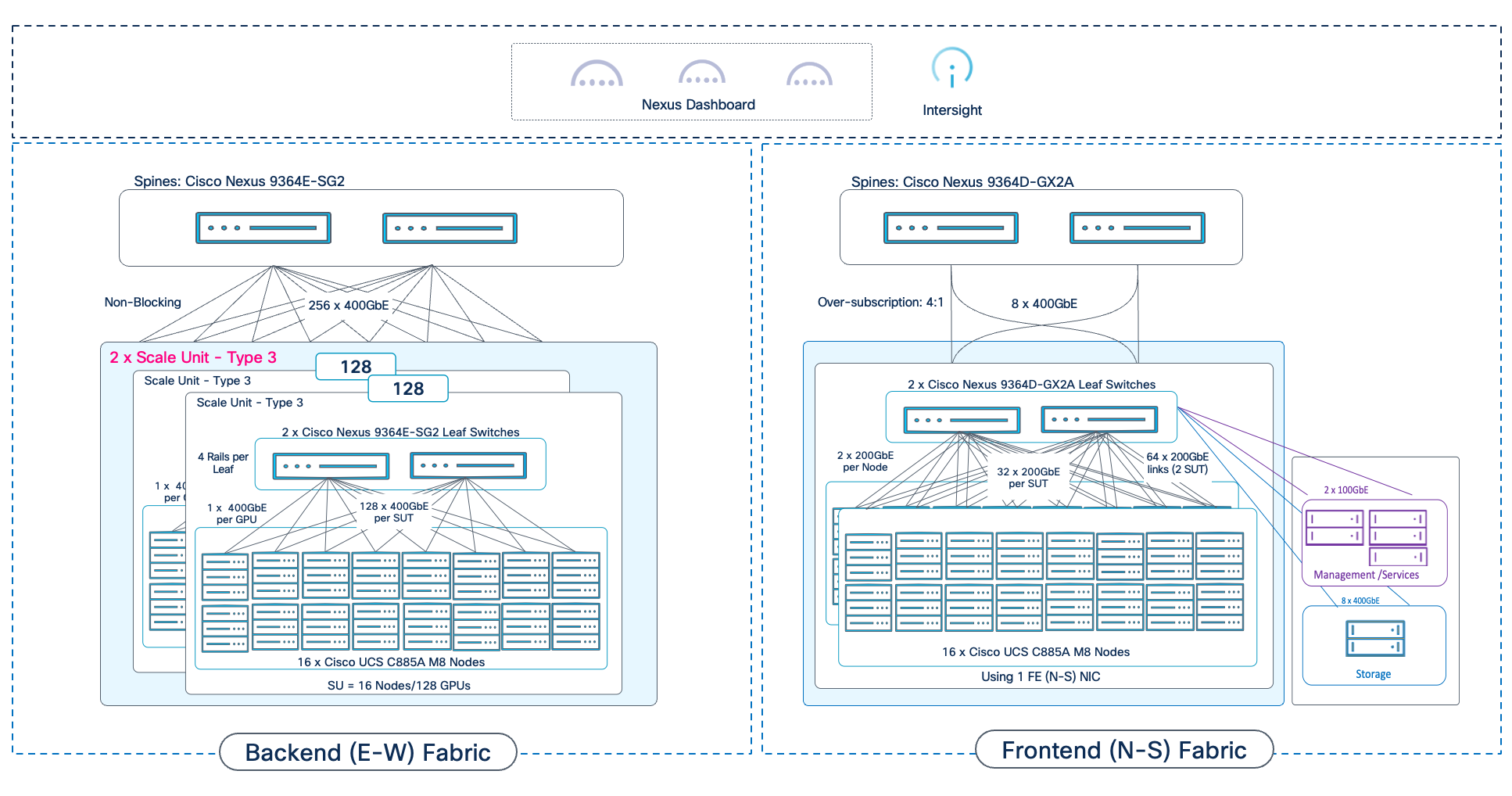

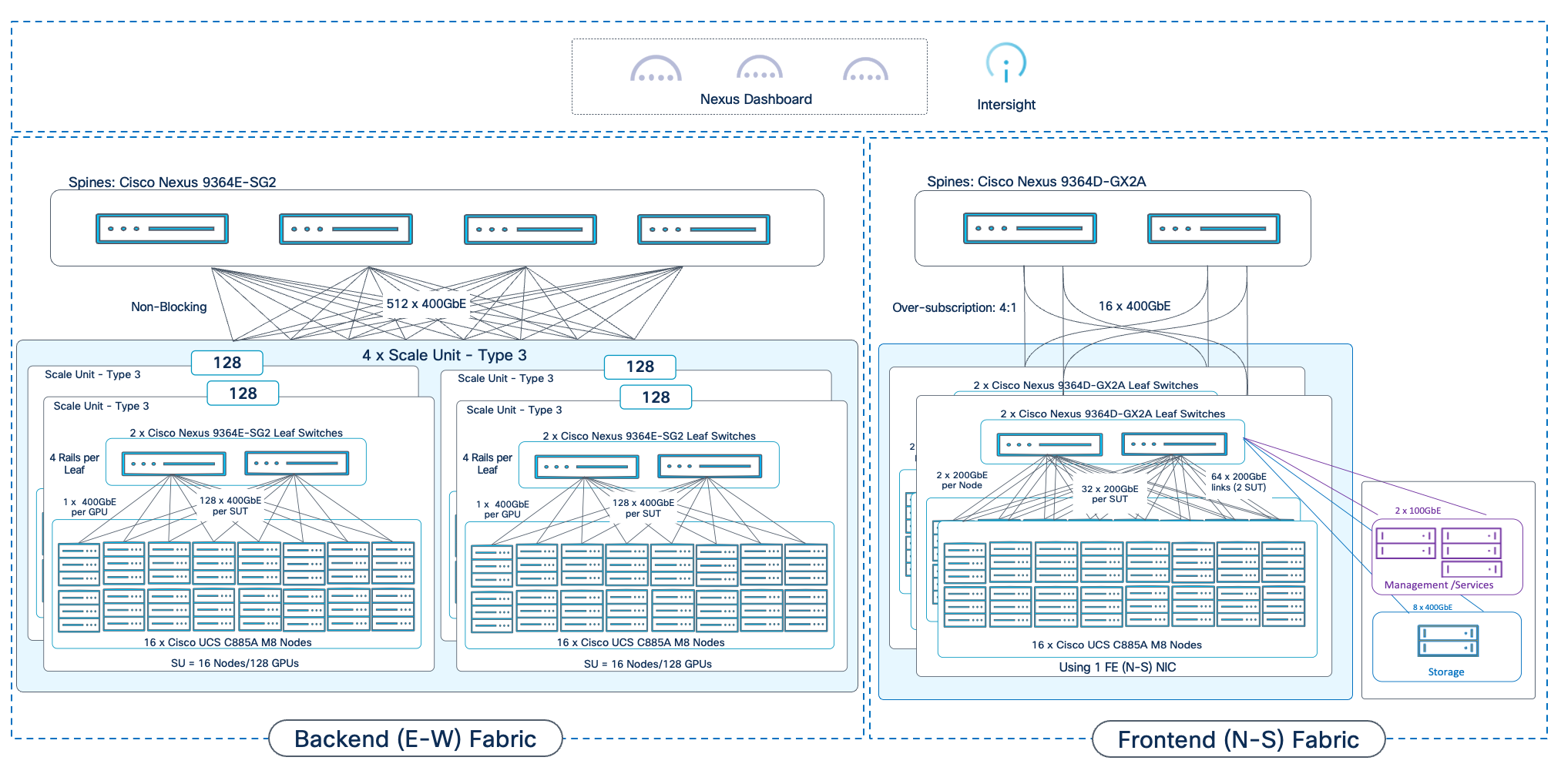

The AI POD architecture is designed to be modular with consistent scalability and operations that allow enterprises to grow their AI infrastructure in a predictable manner as needs evolve. This is achieved through the concept of Scale Units and Scale Unit Types. Enterprises can select from one of the pre-defined Scale Unit Types as their starting cluster and then expand to larger cluster sizes as their needs grow. If a customer knows their end scale target, this can be factored into the selection early.

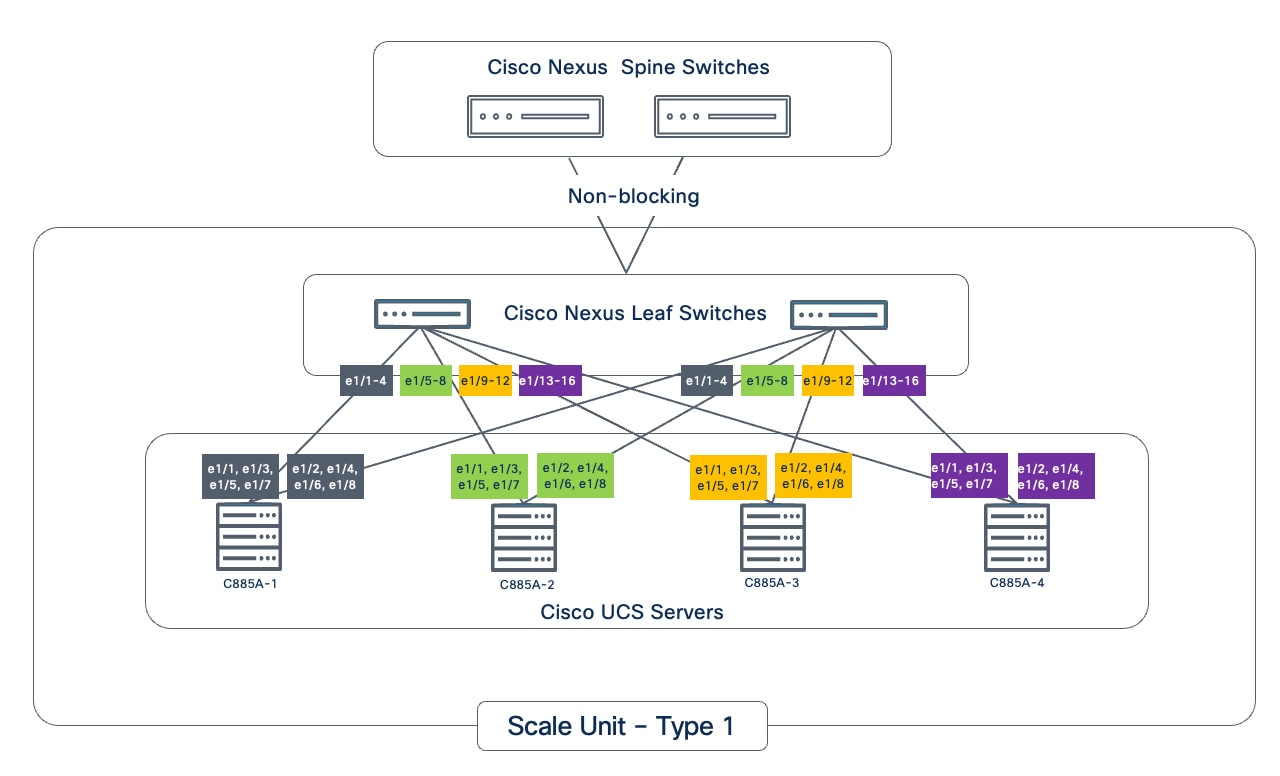

● Scale Units as Building Blocks: A Scale Unit is a foundational, repeatable building block that combines Cisco UCS compute nodes (equipped with high-performance GPUs) and Cisco Nexus leaf switches. Each Scale Unit is engineered to deliver a specific cluster size of AI compute and network capacity. Multiple Scale Unit Types are defined based on the Nexus leaf switch model used with a direct bearing on the GPU cluster size. Both Cisco Nexus Cloudscale and Silicon One (S1) switches are supported.

● Predictable Scale-Out: Larger AI POD clusters can be built out by incrementally adding and interconnecting these individual Scale Units in a robust spine-leaf network topology. This approach ensures that performance scales predictably and consistently, avoiding bottlenecks as the AI deployment expands.

● Flexibility and Right-Sizing: Scale Units come in various configurations (for example, supporting 32, 64, or 128 GPU clusters), allowing enterprises to right-size their initial AI POD deployment to match current workload demands. This modularity provides the flexibility to expand capacity precisely when needed, preventing costly over-provisioning and ensuring efficient resource utilization over time.

● Operational Consistency at Scale: Enterprises can adopt additional scale units as their needs evolve without changing their operational model. The new scale units can be added to an existing backend fabric and managed through Nexus Dashboard. The frontend fabric that supports the backend fabric is also managed through Nexus Dashboard, providing management consistency across fabrics as well.

Design

This chapter contains the following:

● Backend Fabric Design (East-West)

● Frontend Fabric Design (North-South)

● MLOps using Red Hat OpenShift AI

This chapter moves from the high-level architecture to the detailed subsystem design, providing the specific designs for the backend and frontend fabrics, compute, storage, and management layers that form the complete AI POD solution for distributed training and fine-tuning.

AI training and fine-tuning workloads, and the associated GPU clusters, typically rely on two independent networks: a backend (BE) for parallel processing and synchronization across GPU nodes, and a frontend (FE) network for ingesting training data, checkpointing, and logging (Figure 8). In hybrid deployments, the frontend network also handles inferencing traffic from users, applications, and other services. In enterprise deployments, the frontend network can be an existing data center network that meets the requirements. Unlike frontend fabrics, backend fabrics are isolated, typically with no outside network access to other parts of the enterprise or external networks.

Note: A backend fabric is typically not used or necessary in an inferencing only deployment.

In enterprise deployments, the frontend fabric could be an existing enterprise data center fabric that meets the requirements. In this scenario, the storage could be connected to the same fabric, or a dedicated AI storage fabric could also be deployed.

Backend Fabric Design (East-West)

This section details the design of the Backend (East-West) Fabric. In distributed AI model training and fine-tuning, this fabric is critical for performant GPU-GPU communication and data exchange during collective operations, directly impacting the JCT of training workloads. The GPU nodes (like Cisco UCS C885A and Cisco UCS C845A) are typically dense GPU platforms that connect to this fabric using dedicated Network Interface Cards (NICs), separate from those used for the FE fabric.

As discussed, enterprises typically do not train large foundational models (like LLMs) from scratch for their AI initiatives and use cases. Instead, they focus on customizing these pre-trained models with their organization’s data, often using fine-tuning, Retrieval-Augmented Generation (RAG), or a combination of both approaches. These customization workloads, while resource-intensive, require significantly fewer GPUs (magnitudes less) and supporting infrastructure compared to the initial training of these models. The Cisco AI POD's Backend Fabric is purpose-built and designed to meet these distinct characteristics and stringent requirements of enterprise AI workloads, as previously described.

Key Requirements

The performance of AI training and fine-tuning workloads, and therefore the backend fabric, is based on JCT. Therefore, the fabric must be designed to meet critical network requirements that ensure optimal JCTs.

Note: As previously discussed, the acceptable JCT is subjective to each organization and may depend on the specific AI initiative or use case, as well as the characteristics of the workload itself (for example, model size, dataset size).

To ensure optimal JCT, the backend fabric must provide the following:

● High Bandwidth: To handle concurrent, large data (elephant) flows between GPUs.

● Low End-to-End Latency: To minimize data exchange delays between nodes, as these directly impact JCT.

● Lossless Fabric: To avoid network interruptions or lost packets that can force training jobs to restart, significantly affecting JCT

● Low Jitter: As fast, bursty GPU operations can cause buffer overflows, leading to temporary congestion if not managed.

● Efficient Load Balancing: AI training workloads typically have low entropy, which can make traditional load balancing methods like ECMP sub-optimal. ECMP is designed for high-entropy flows common in enterprise data centers but may be less than ideal for AI workloads due to the low variability exhibited by these flows.

Note: The low entropy is a significant concern when all GPUs are assigned to a single training job. However, as the number of concurrent training workloads on the fabric increases, the entropy also increases. Monitoring the backend fabric to understand these traffic patterns will allow you to understand the needs of your environment.

Modular Design Tailored for Enterprise AI

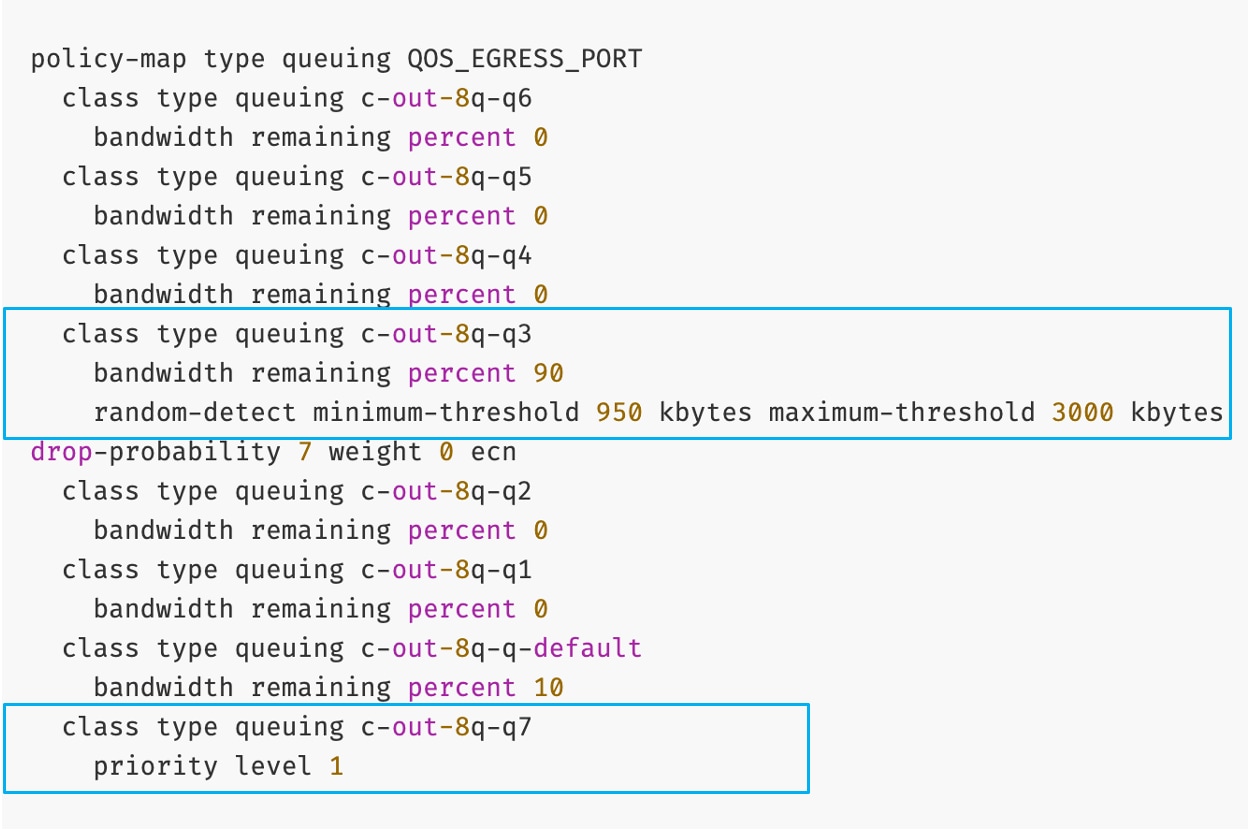

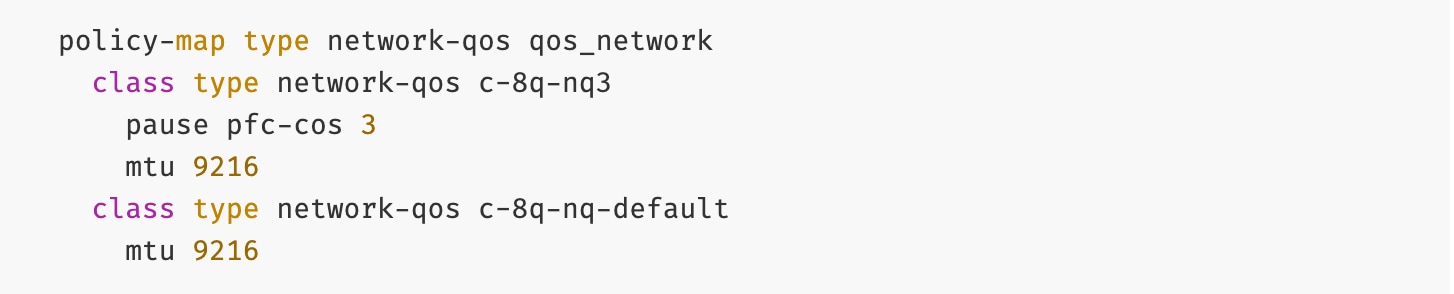

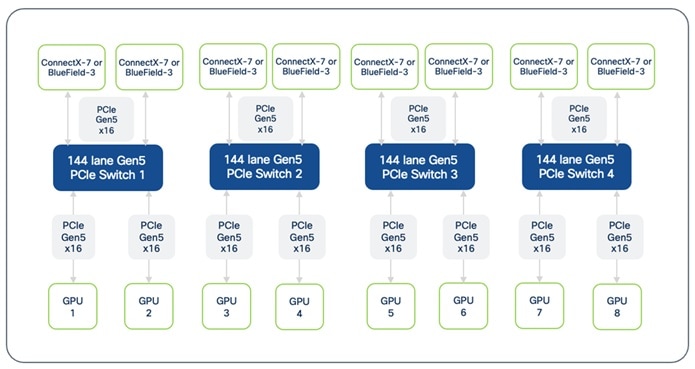

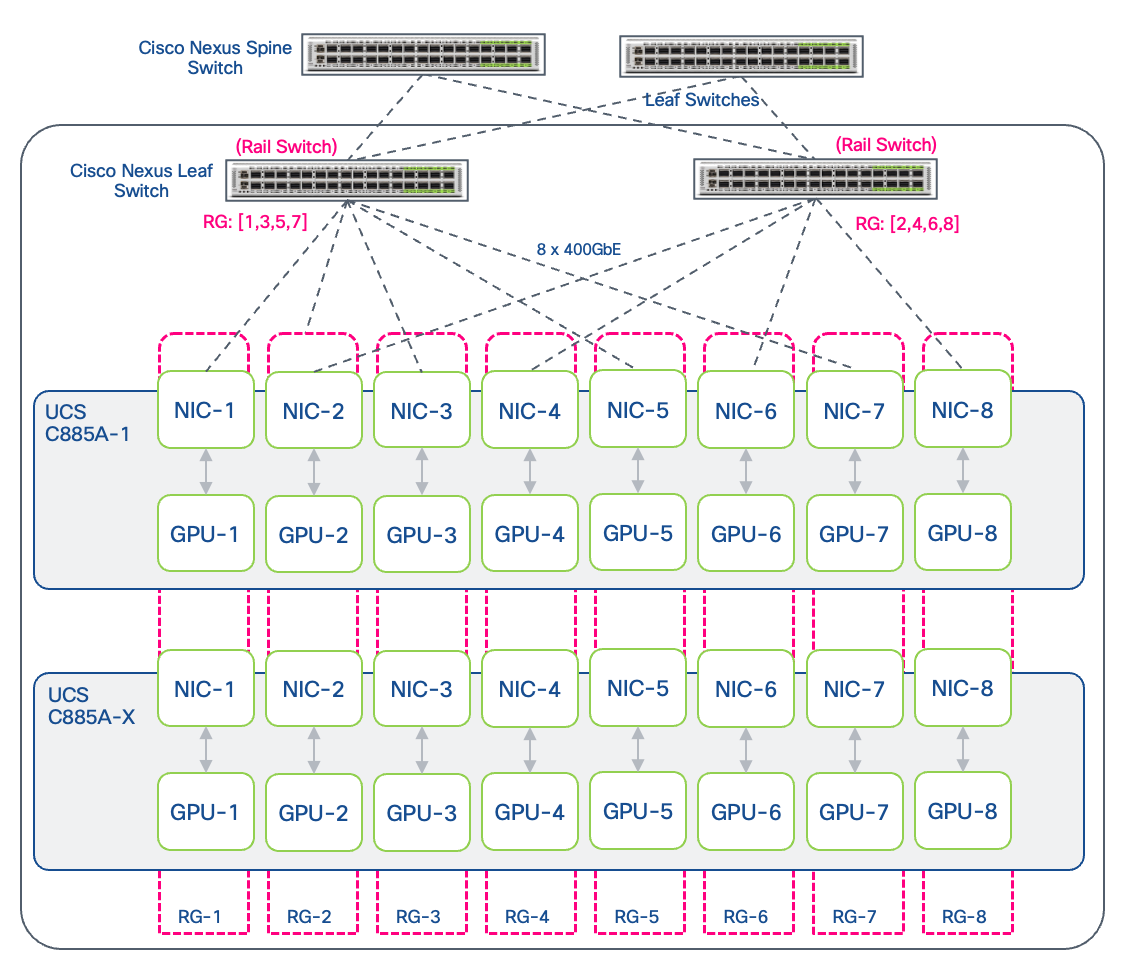

The backend fabric in AI POD uses a modular architecture built around the concept of Scale Units (SU). As outlined in NVIDIA's Enterprise Reference Architecture (ERA) for HGX-8-9-400 architectures (like Cisco UCS C885A), NVIDIA defines Scale Units as pre-defined units of four GPU compute nodes. These units provide a flexible and granular approach to scaling AI training infrastructure.

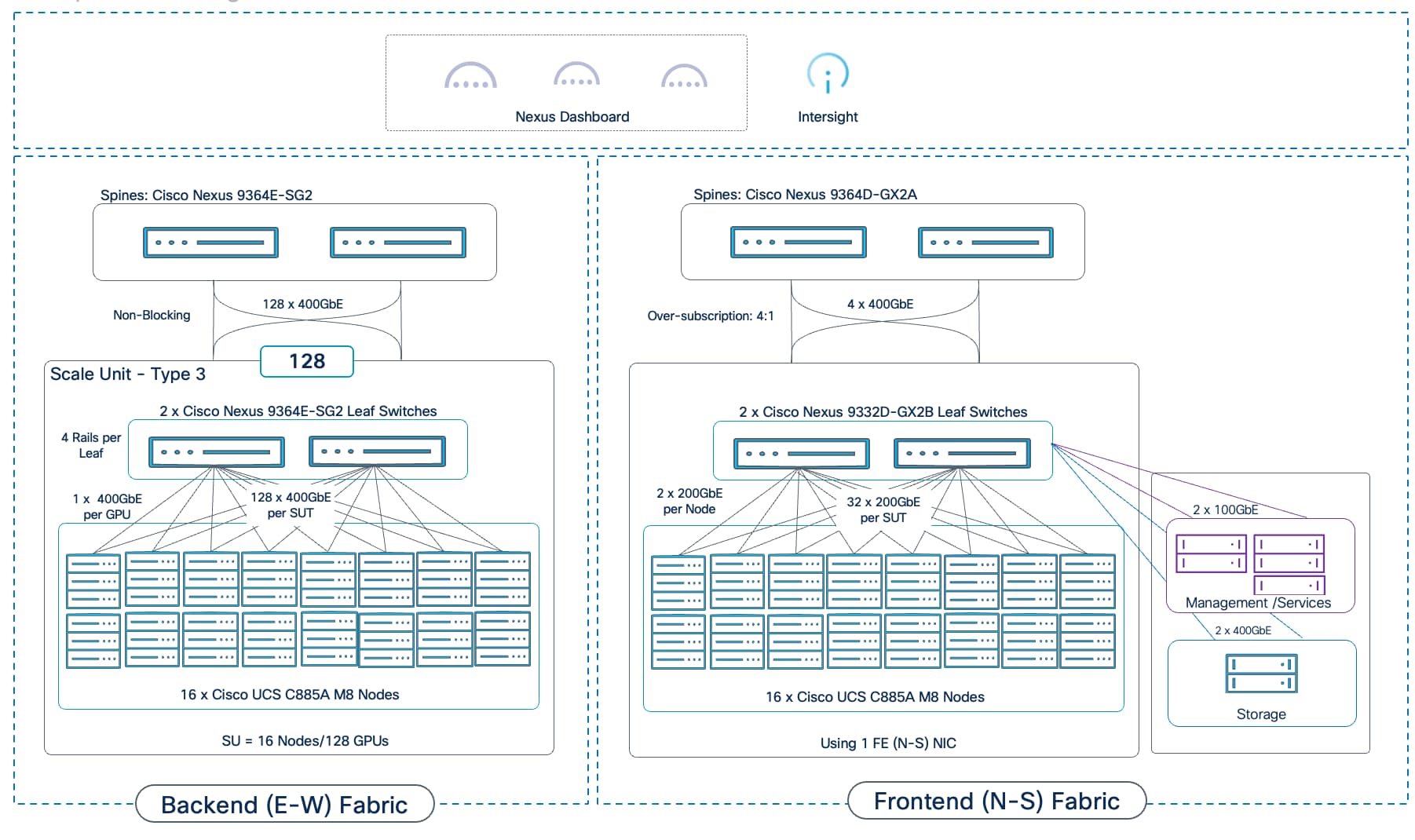

Scale Units

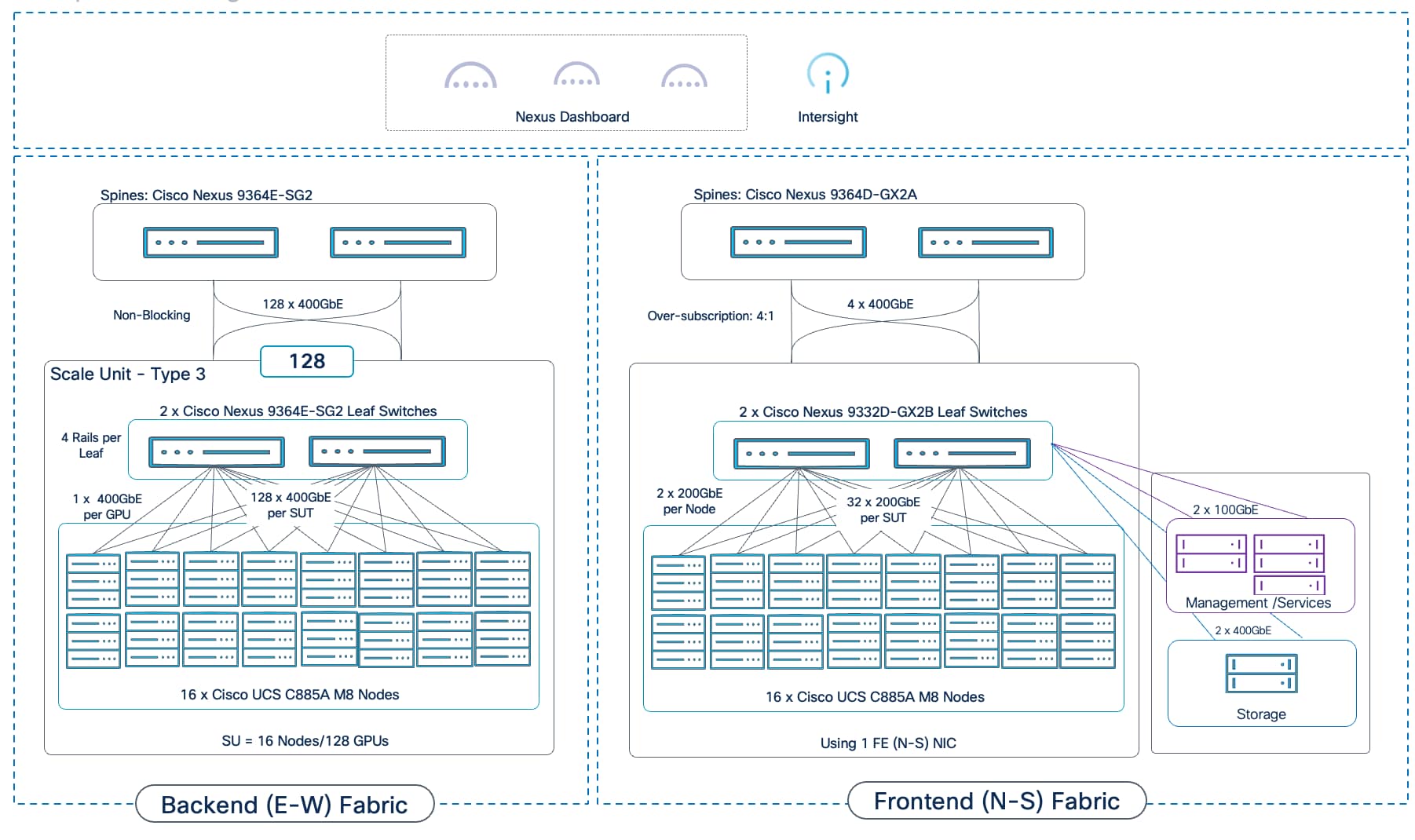

In the Cisco AI POD for training architecture, the Scale Unit definition has been expanded to include a pair of network switches and the GPU compute nodes that connect to them. The different Nexus data center switches used in the AI POD architecture are right-sized to support the GPU node scale for that SU, resulting in three distinct Scale Unit Types (see Table 4 and Table 5). These scale unit types serve as foundational building blocks in the Cisco AI POD architecture for building a backend fabric for training and fine-tuning. It is important to note that these pre-defined compute-plus-network units are designed to align with the typical GPU cluster sizes that enterprises need for fine-tuning and customization.

Unlike the thousands of GPUs required for training large LLMs from scratch, enterprise fine-tuning and customization workloads typically require smaller GPU clusters, though they often need to support multiple such workloads running concurrently. To address this, the Cisco AI PODs architecture provides multiple Scale Unit Types that enterprises can select from as a starting point. Organizations can then incrementally grow their cluster with consistent performance and design methodology. This modular approach enables enterprises to right-size their infra to meet their current workload needs, but then seamlessly expand by adding more scale units as AI projects evolve. See next section for detailed descriptions and scaling options.

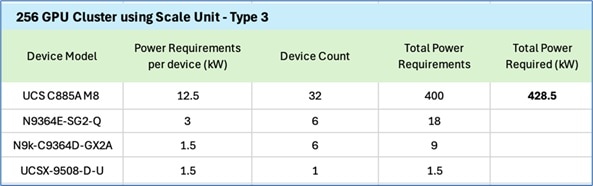

Table 4. Scale Unit Types for AI PODs with Cisco UCS C885A M8 Servers

| Scale Unit Type |

Nexus Switch Model & Count |

UCS C885A Nodes (Max) |

GPU Cluster Size (Max per SU) |

| Scale Unit – Type 1 |

2 x N9k-C9332D-GX2B |

Up to 4 Nodes |

Up to 32 GPUs |

| Scale Unit – Type 2 |

2 x N9k-C9364D-GX2A |

Up to 8 Nodes |

Up to 64 GPUs |

| Scale Unit – Type 3 |

2 x N9k-C9364E-SG2 |

Up to 16 Nodes |

Up to 128 GPUs |

Table 5. Scale Unit Types for AI PODs with Cisco UCS C845A M8 Servers

| Scale Unit Type |

Nexus Switch Leaf Pair |

UCS C845A* Nodes |

GPU Cluster Size* |

| Scale Unit – Type 1 |

2 x N9k-C9332D-GX2B |

Up to 8 Nodes |

Up to 64 GPUs |

| Scale Unit – Type 2 |

2 x N9k-C9364D-GX2A |

Up to 16 Nodes |

Up to 128 GPUs |

| Scale Unit – Type 3 |

2 x N9k-C9364E-SG2 |

Up to 32 Nodes |

Up to 256 GPUs |

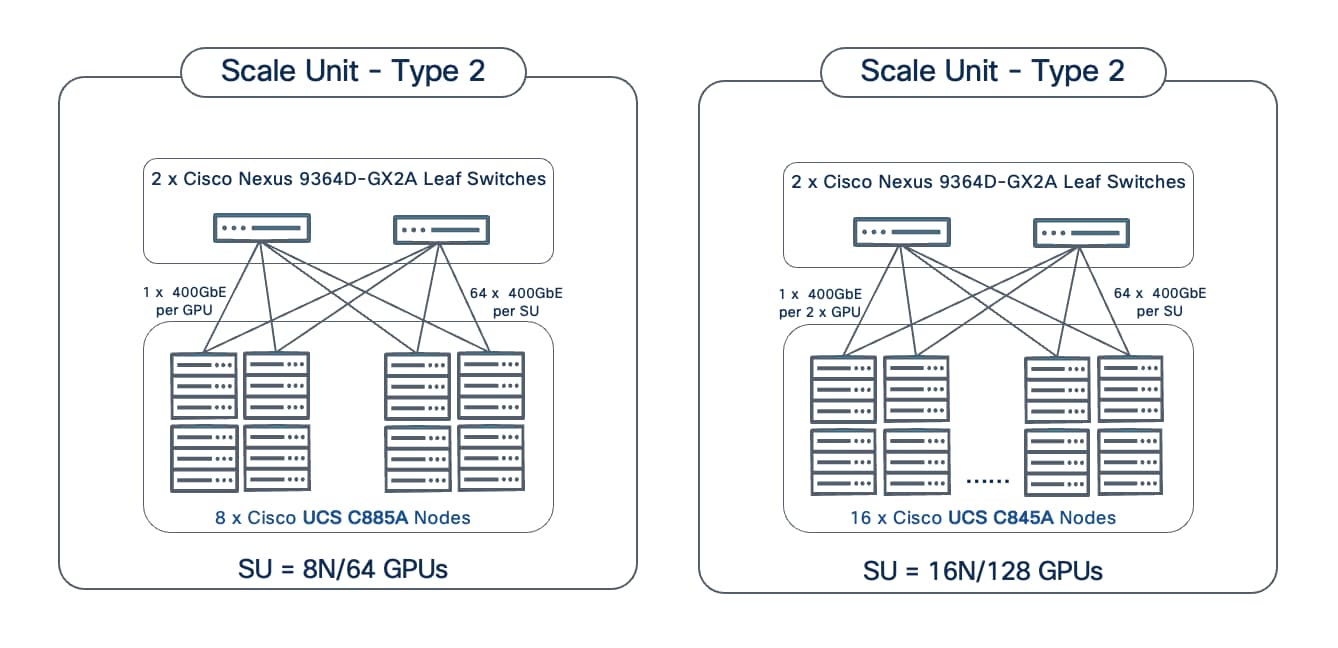

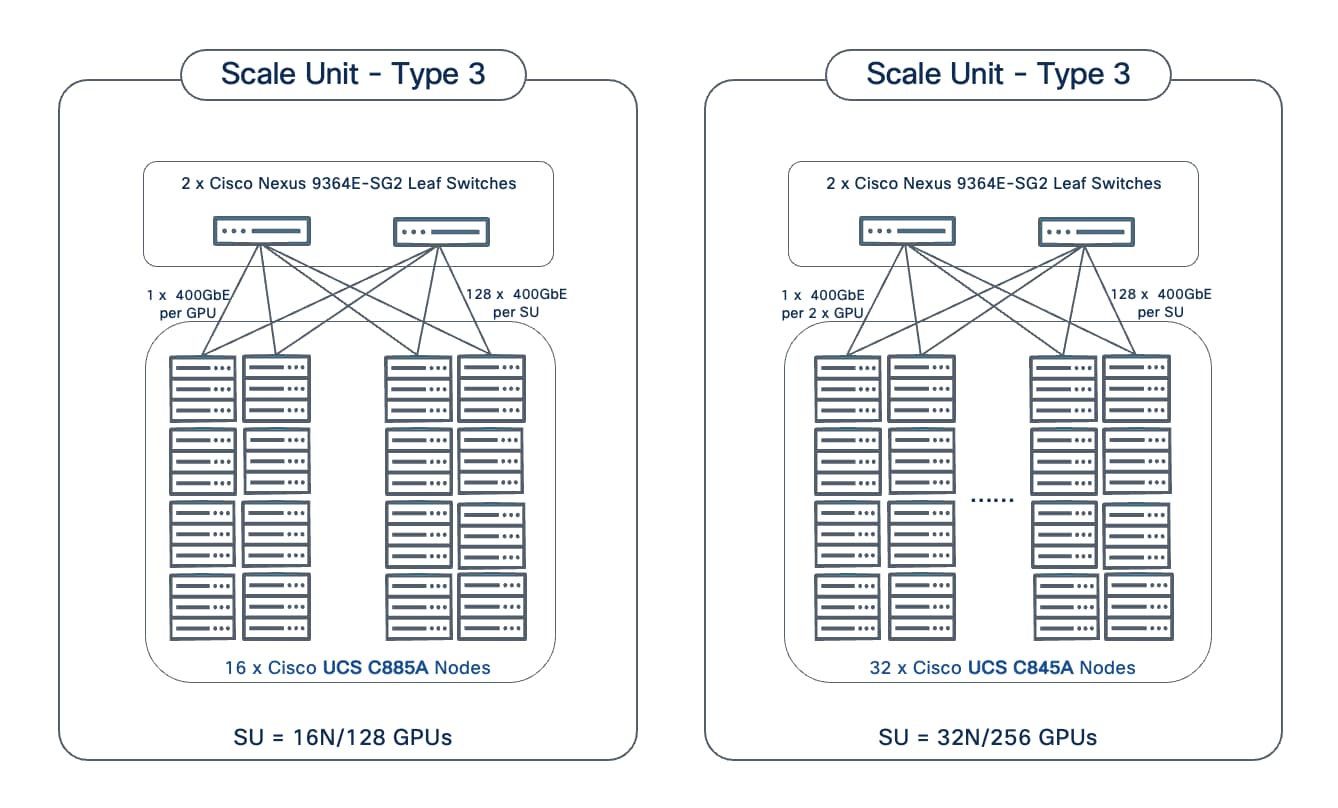

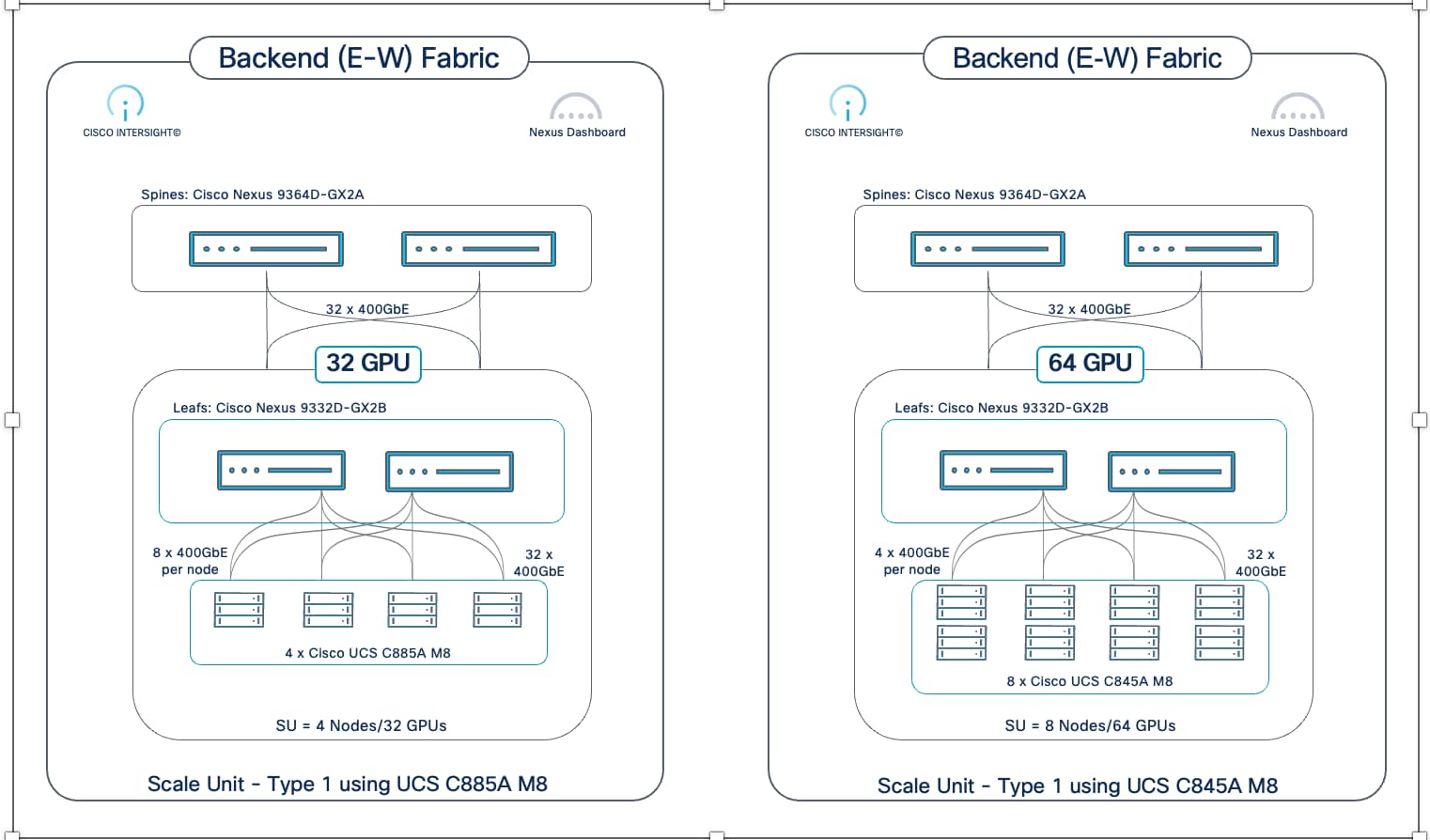

*On a Cisco UCS C845A M8 server, 2 GPUs share 1 East-West NIC for connecting to the BE fabric. This reduces the number of switch ports required per node, allowing for a higher node count and GPU density per Scale Unit Type when compared to using Cisco UCS C885A. This also assumes an 8-GPU configuration per C845A node.

Scale Unit Types: Configurations and Scaling Options

This section provides additional details on each of the scale unit types introduced in the previous section. These units serve as the foundational building blocks for the AI POD infrastructure, combining Cisco UCS compute nodes and Cisco Nexus leaf switches to deliver right-sized infrastructure of varying GPU cluster sizes for enterprise AI training and fine-tuning workloads.

Note: Though not shown, each scale unit type in the figures below connects to a pair of Spine switches (recommended). Also, each scale unit type is rail-optimized within the SU.

Scale Unit - Type 1

Scale Unit Type 1 consists of 2 Cisco Nexus 9332D-GX2B Cloudscale switches with up to:

● 4 x Cisco UCS C885A M8 servers (4 nodes, 32 GPUs), with each server connecting to leaf switches using 8 x 400GbE links.

● 8 x Cisco UCS C845 M8 servers (8 nodes, 64 GPUs), with each server connecting to leaf switches using 4 x 400GbE links.

Scale Unit - Type 2

Scale Unit Type 2 consists of 2 Cisco Nexus 9364D-GX2A Cloudscale switches with up to:

● 8 x Cisco UCS C885A M8 servers (8 nodes, 64 GPUs), with each server connecting to leaf switches using 8 x 400GbE links.

● 16 x Cisco UCS C845 M8 servers (16 nodes, 128 GPUs), with each server connecting to leaf switches using 4 x 400GbE links.

Scale Unit - Type 3

Scale Unit Type 3 consists of 2 Cisco Nexus 9364E-SG2 Silicon One switches with up to:

● 16 x Cisco UCS C885A M8 servers (16 nodes, 128 GPUs), with each server connecting to leaf switches using 8 x 400GbE links.

● 32 x Cisco UCS C845 M8 servers (32 nodes, 256 GPUs), with each server connecting to leaf switches using 4 x 400GbE links.

The individual Scale Unit Types described above represents the starting point for an AI POD infrastructure for training and fine-tuning. As AI infrastructure needs grow, enterprises can expand to larger GPU clusters by adding additional Scale Units. This may involve adding more scale units of a given type to the same Spine switch pair or may require adding additional spine switch pairs to support a greater number of Scale Units. For a comprehensive overview of how the different scale unit types can be scaled to achieve large cluster sizes, go to Scale the Backend Fabric.

Network Topology

The backend fabric provides the optimal networking for GPUs in a cluster to communicate with each other in a multi-node environment, which is critical for accelerating distributed AI/ML training and fine-tuning.

Spine-Leaf Clos Topology