Cisco AI POD with VAST Data for Training and Fine-Tuning Deployment Guide

Available Languages

Bias-Free Language

The documentation set for this product strives to use bias-free language. For the purposes of this documentation set, bias-free is defined as language that does not imply discrimination based on age, disability, gender, racial identity, ethnic identity, sexual orientation, socioeconomic status, and intersectionality. Exceptions may be present in the documentation due to language that is hardcoded in the user interfaces of the product software, language used based on RFP documentation, or language that is used by a referenced third-party product. Learn more about how Cisco is using Inclusive Language.

- US/Canada 800-553-2447

- Worldwide Support Phone Numbers

- All Tools

Feedback

Feedback

In partnership with:

About the Cisco Validated Design Program

The Cisco Validated Design (CVD) program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, go to: https://www.cisco.com/go/designzone.

Executive Summary

Cisco AI PODs are modular, pre-validated infrastructure solutions designed to support the full AI lifecycle, including training, fine-tuning, and inference workloads. Built on Cisco UCS compute, Cisco Nexus networking, and industry-leading GPUs, Cisco AI PODs provide a scalable, secure, and operationally efficient foundation for enterprise AI deployments in data center and edge environments. The architecture takes a building-block approach using Scale Unit Types, enabling organizations to start with deployments of 32-, 64-, or 128-GPU clusters. These foundational building blocks can then scaled incrementally and predictably to support 256, 512, or higher GPU clusters as requirements evolve. Cisco AI PODs are validated to simplify design, deployment, and day-to-day operations while supporting a broad range of AI use cases.

The solution is based on one of several design options presented in the Cisco AI POD for Enterprise Training and Fine-Tuning Design Guide. The implementation details enable infrastructure engineers and AI/ML practitioners to quickly build, configure, and operationalize a high-performance AI cluster.

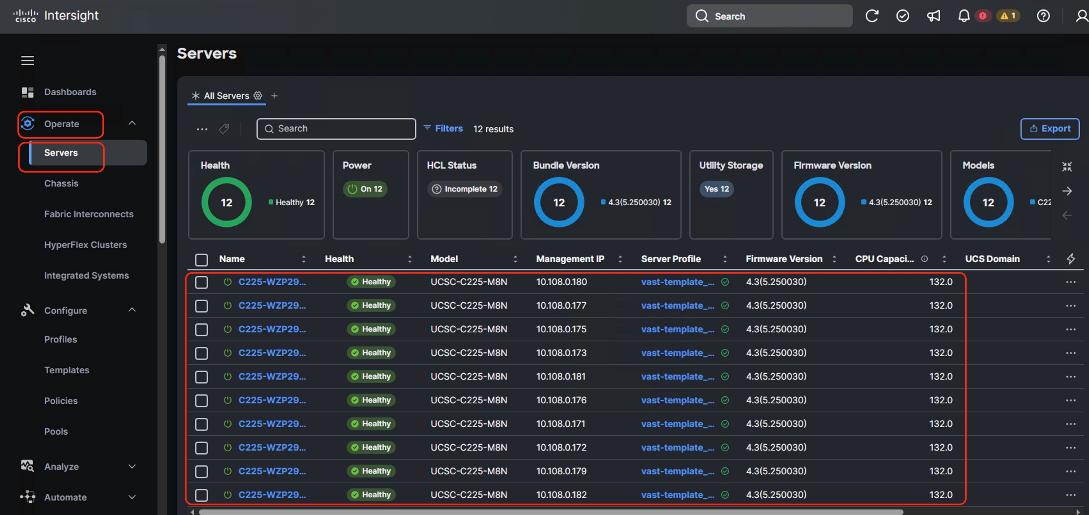

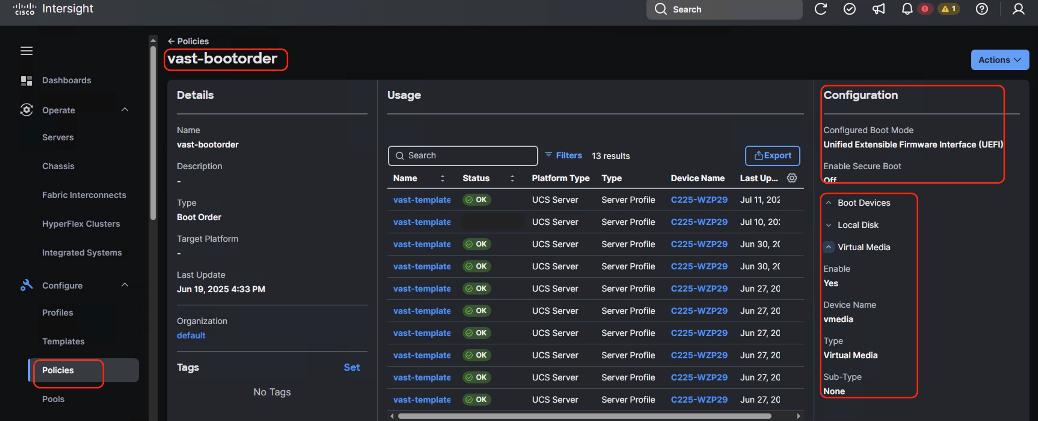

Within this architecture, Cisco E-Box, based on Cisco UCS C225 M8 servers, provides a flexible, CPU-optimized compute platform for AI infrastructure services, data processing, and supporting control-plane functions. Cisco AI PODs enable centralized lifecycle management through Cisco Intersight and Nexus Dashboard, delivering consistent provisioning, automation, and operational visibility across the AI infrastructure. This approach supports AI workloads such as large language models (LLMs), generative AI, retrieval-augmented generation (RAG), and analytics, while allowing configurations to be aligned with performance, scalability, and cost requirements.

When combined with VAST Data, Cisco AI PODs deliver a validated, high-performance storage architecture optimized for data-intensive AI workloads. VAST Data provides a single, global namespace with file and object access, enabling efficient data sharing across AI training and inference workflows without data duplication. Deployed on Cisco UCS-based platforms, the VAST Data architecture enables independent scaling of performance and capacity, delivering predictable low latency and high throughput as AI environments expand.

The integrated solution of Cisco AI PODs, Cisco Nexus Dashboard Cisco E-Box (Cisco UCS C225 M8), and VAST Data provides a cohesive AI-ready infrastructure that simplifies data access, supports efficient GPU utilization, and reduces operational complexity. Centralized management through Cisco Intersight, combined with VAST Data’s parallel data services, enables consistent operations, enterprise-grade security, and high availability. Backed by Cisco Validated Designs and partner validation, this solution helps organizations deploy and scale AI workloads with reduced risk and increased confidence.

This deployment guide, together with the AI POD Design Guide and the GitHub repo for this solution, serves as the complete AI POD Cisco Validated Design for Enterprise Training and Fine-tuning. The complete portfolio of Cisco AI POD CVDs is available here: Cisco Validated Design Zone for AI-Ready Infrastructure.

Solution Overview

This chapter contains the following:

Cisco AI PODs integrated with VAST Data offers a comprehensive, scalable infrastructure designed to accelerate AI and machine learning workloads. This solution combines Cisco UCS servers, Cisco Nexus switches, and VAST Data storage—with the advanced GPU-accelerated compute capabilities of Cisco AI PODs. Together, they provide a validated, high-performance platform optimized for AI lifecycle tasks such as training, inferencing, and deployment. Leveraging technologies like Cisco UCS X-Series modular systems, NVIDIA GPUs, and software platforms including NVIDIA Base Command Manager, this integrated environment simplifies AI infrastructure management through Cisco Intersight. The combined solution delivers high-speed networking, persistent storage, and automation to reduce complexity and enable enterprises to efficiently scale AI workloads with security and operational visibility.

The intended audience of this document includes but is not limited to IT architects, sales engineers, field consultants, professional services, IT managers, partner engineering, and customers who want to take advantage of an infrastructure built to deliver IT efficiency and enable IT innovation.

This document provides deployment guidance around setting up Cisco AI PODs with Cisco UCS C885A M8 servers along with VAST Data AI training and fine-tuning use cases. This document introduces various design elements and explains various considerations and best practices for a successful deployment.

The Cisco AI POD solution in this document is a fully integrated solution with high-density compute, high-performance networking, scale-out storage, and a robust software stack, designed for Enterprise Training and Fine-Tuning. This guide provides detailed implementation guidance for deploying a 32-GPU cluster and covers the configuration of compute, network, storage, and the software stack required to support distributed training and fine-tuning workloads. It also includes the platform level validations to ensure that the integrated subsystems are functioning as expected. The integrated solution consists of the following components:

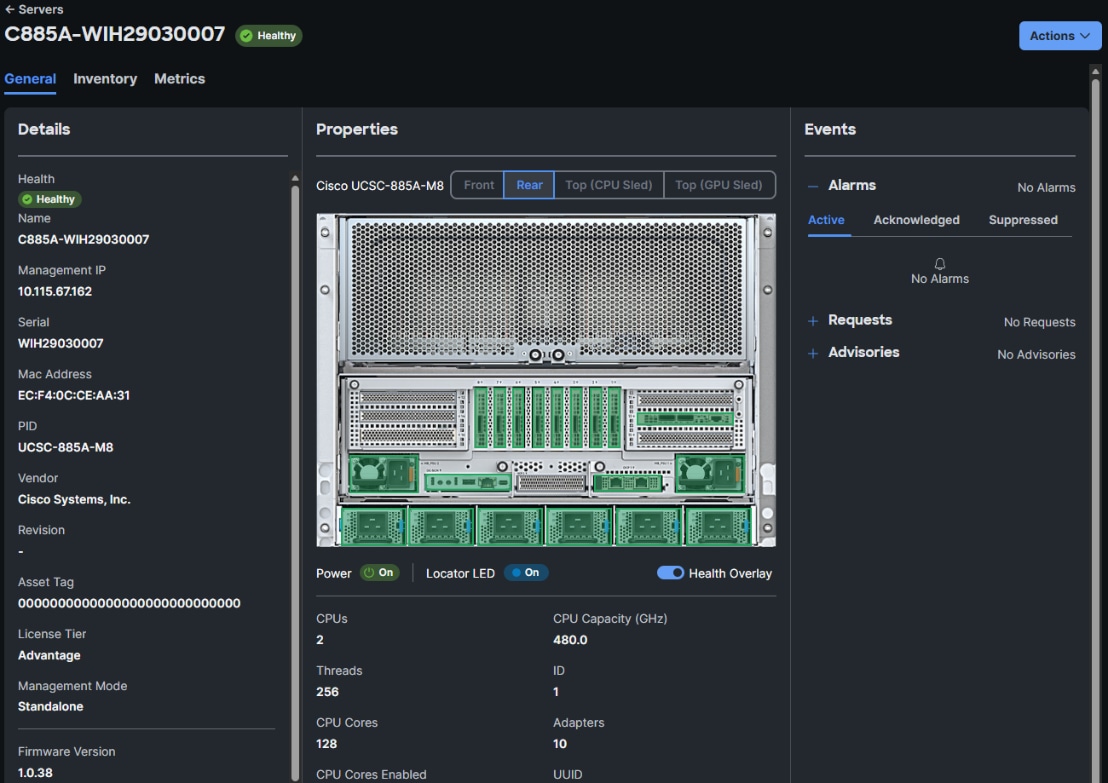

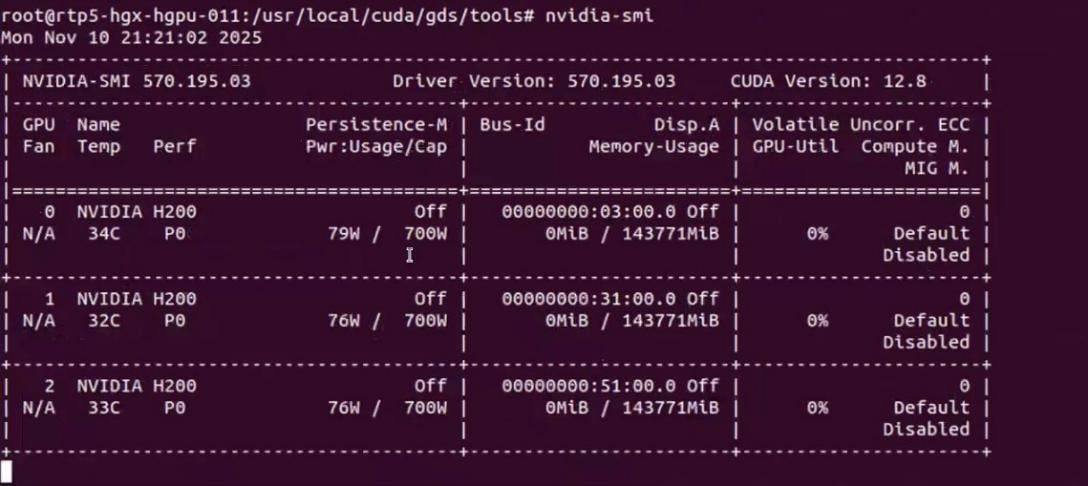

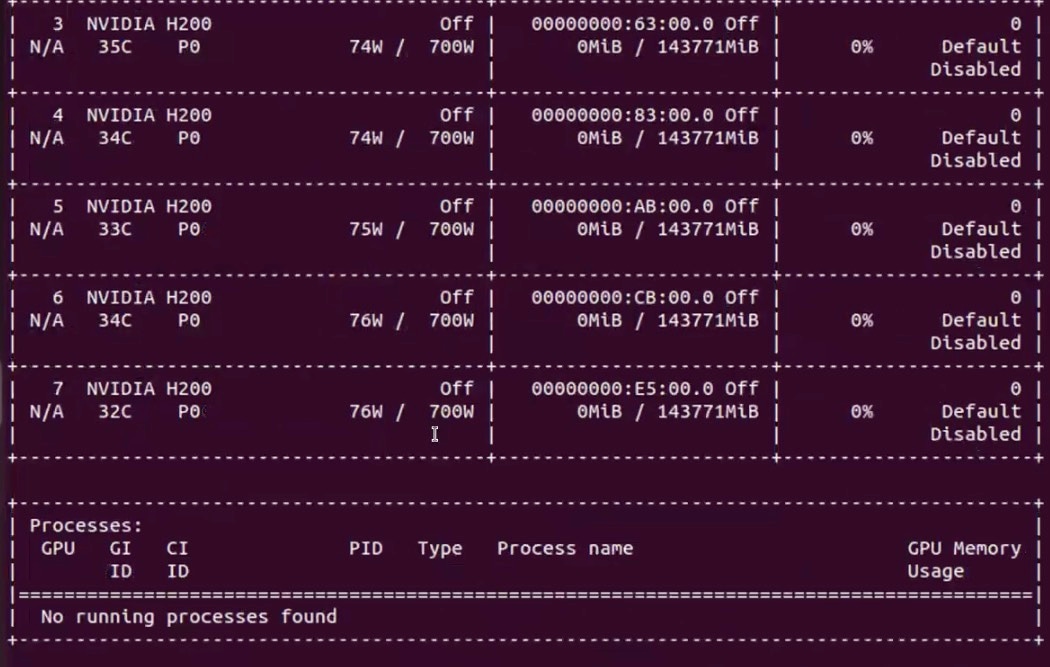

● Cisco UCS C885A M8 Servers: Four nodes, each equipped with eight NVIDIA H200 GPUs (SXM) and dual AMD EPYC processors. These servers provide the primary compute power for distributed training and fine-tuning. Within the server, GPUs are interconnected via NVIDIA NVLink, delivering 900 GB/s of bidirectional bandwidth per node.

● Cisco UCS X-Series Direct: A dedicated management cluster used to host the management services.

● Network: Dual-fabric architecture (Backend and Frontend) utilizing Cisco Nexus 9000 Series switches, managed and deployed using Cisco Nexus Dashboard.

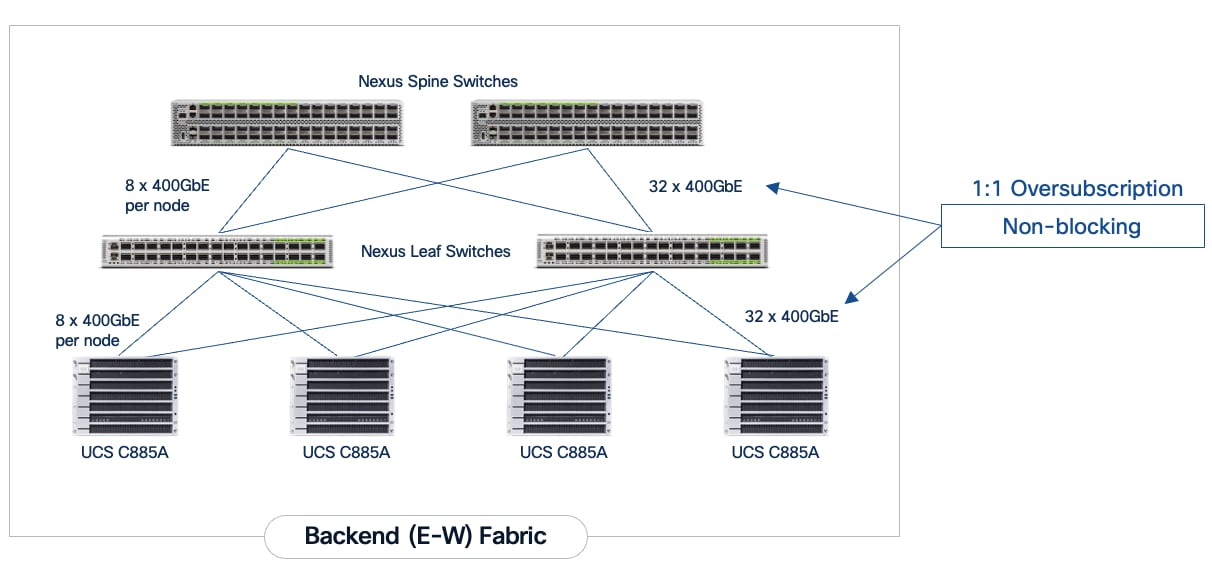

● Backend (East-West) Fabric: Four Cisco Nexus 9332D-GX2B switches connected in a two-tier spine-leaf Clos-based topology. This fabric provides a dedicated, non-blocking 400GbE environment for GPU-to-GPU communication via RoCEv2.

● Frontend (North-South) Fabric: Four Cisco Nexus 9332D-GX2B switches, two as compute + management leaf switches and two as dedicated storage leaf switches. This fabric provides connectivity for cluster management, storage I/O, and user access.

● VAST Data on Cisco EBox: VAST Data platform is deployed on Cisco EBox based on Cisco UCS C225 M8 servers, provides a CPU-dense, flexible platform well suited for AI data services, metadata processing, and infrastructure control functions, enabling efficient integration of VAST Data within Cisco AI POD architectures aligned to NVIDIA reference designs.

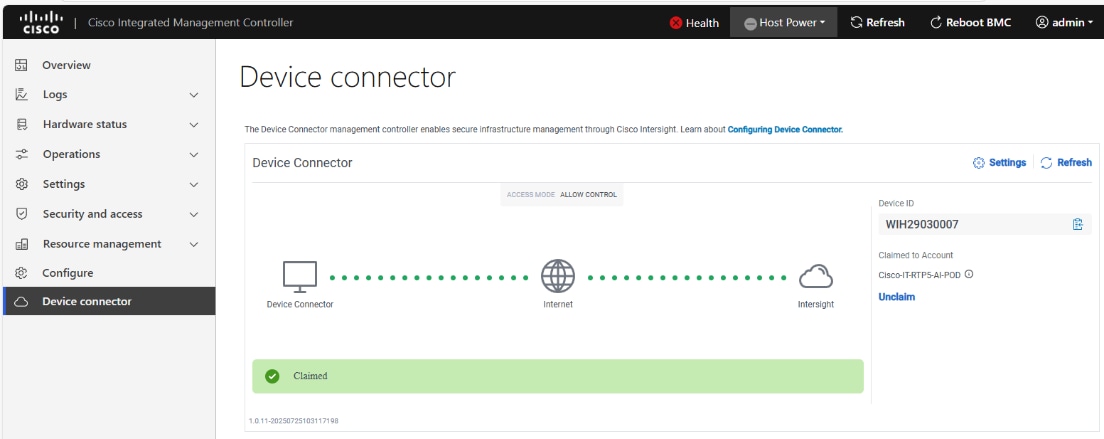

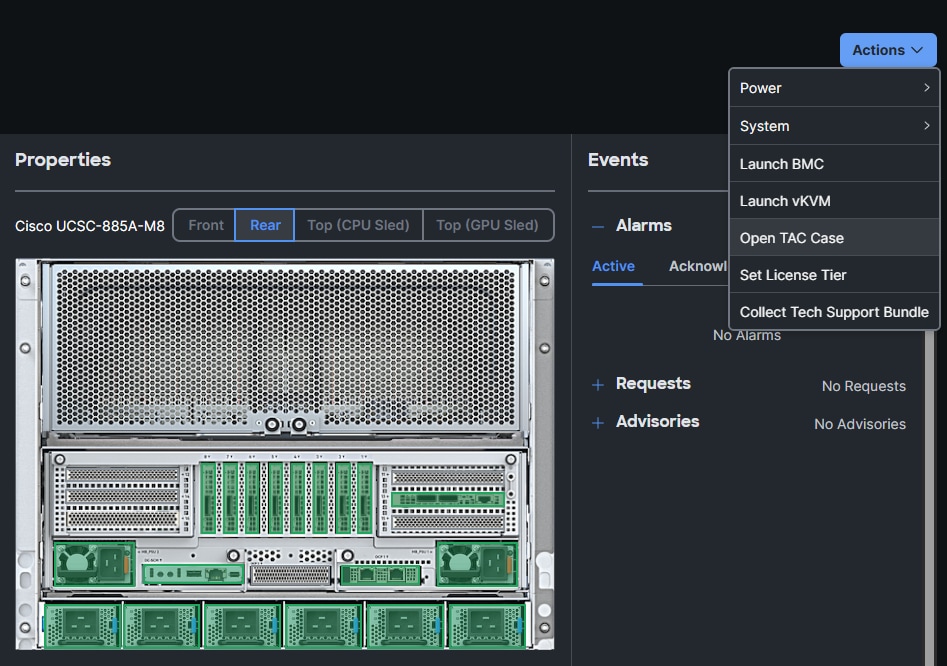

● Cisco Intersight: Provides hardware health monitoring and visibility for the Cisco UCS C885A M8 GPU nodes while managing the complete lifecycle of the Cisco UCS X-Series management cluster.

● Cisco Nexus Dashboard: Serves as the centralized automation and operations platform for both the Backend and Frontend network fabrics.

● NVIDIA AI Enterprise (NVAIE): A comprehensive suite of AI software that includes optimized drivers, CUDA libraries, and the NVIDIA Collective Communications Library (NCCL) required for performant distributed training.

Solution Design

This chapter contains the following:

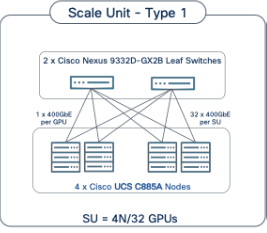

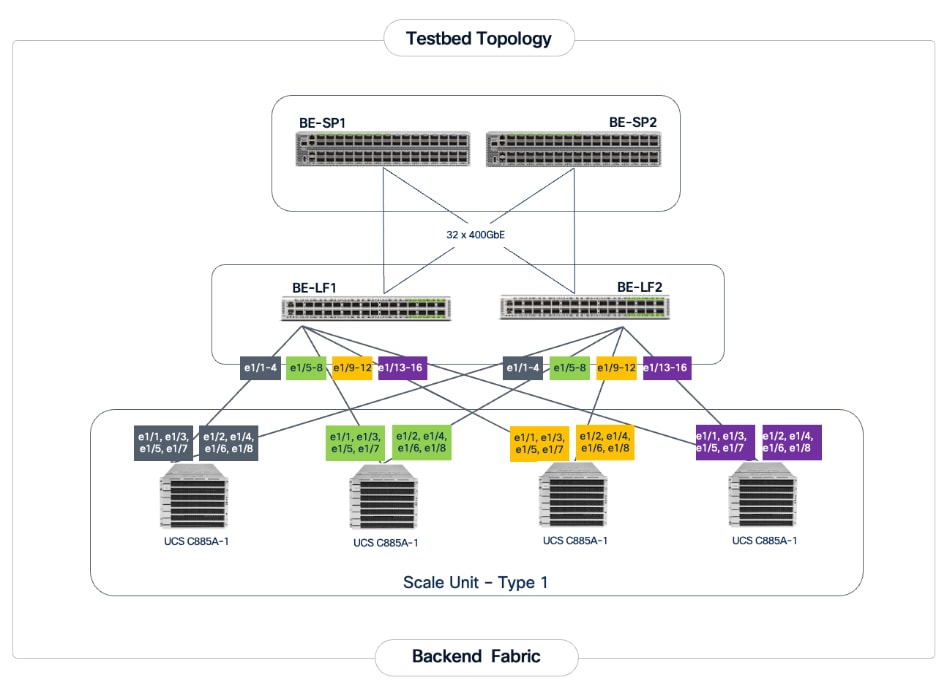

The Cisco AI POD architecture is a modular, building-block design using Scale Unit Types that can be predictably and incrementally scaled to support large GPU clusters as described in the Cisco AI POD for Enterprise Training and Fine-Tuning Design Guide. This implementation is based on Scale Unit - Type 1 (see Figure 1), a 32-GPU cluster using Cisco UCS dense GPU servers, Cisco Nexus networking, VAST Data on Cisco EBox, integrated into a unified infrastructure stack.

Cisco AI PODs with VAST Data meets the following general design requirements:

● Resilient design across all layers of the infrastructure with no single point of failure

● Scalable design with the flexibility to add compute capacity, storage, or network bandwidth as needed

● Modular design that can be replicated to expand and grow as the needs of the business grow

● Flexible design that can support different models of various components with ease

● Simplified design with the ability to integrate and automate with external automation tools

● Cloud-enabled design which can be configured, managed, and orchestrated from the cloud using GUI or APIs

The following figure illustrates the logical infrastructure stack, validated in this solution:

For the AI POD networking and server design, please refer to the Cisco AI POD for Enterprise Training and Fine-Tuning Design Guide. This document focuses on the VAST Data design integrated with Cisco AI PODs.

The storage system and architecture are critical components of AI training, fine-tuning, and inference environments. AI workloads require extremely high performance, linear scalability, and secure shared access to data in order to efficiently read large training datasets and write model checkpoints, logs, embeddings, and other artifacts throughout the AI lifecycle. A primary storage requirement for AI training is very high-throughput, low-latency sequential reads, as massive datasets must be rapidly streamed into GPU memory at the start of each training epoch, while also supporting highly parallel metadata operations.

The VAST Data platform is deployed on Cisco EBox leveraging Cisco UCS C-Series servers. The solution implements a disaggregated, shared-nothing architecture that separates compute (VAST CNodes) from storage capacity (VAST DNodes) both deployed on each Cisco EBox node This architecture enables independent scaling of performance and capacity while presenting a single global namespace across the entire cluster, simplifying data access for AI workloads running on Cisco AI PODs.

Each VAST Data EBox node is connected redundantly to a pair of Cisco Nexus 9332D-GX2B leaf switches using high-speed Ethernet connectivity. The existing deployment is configured with 200GbE front-end networking, including NFSv3, NFSv4.x, and NFS over RDMA (NFS-RDMA) for ultra-low-latency data access, as well as S3 object access to the same underlying data without data duplication. When equipped with NVIDIA ConnectX 7 adapters, VAST Data enables high-bandwidth, RDMA-accelerated data paths optimized for GPU-dense environments.

VAST Data’s parallel, distributed metadata architecture eliminates traditional file system bottlenecks, allowing all clients to access all storage nodes concurrently. This design enables massive parallel I/O, consistent low latency, and linear performance scaling as additional CNodes and DNodes are added. AI workloads benefit from parallel data access patterns without the constraints of controller-based storage architectures.

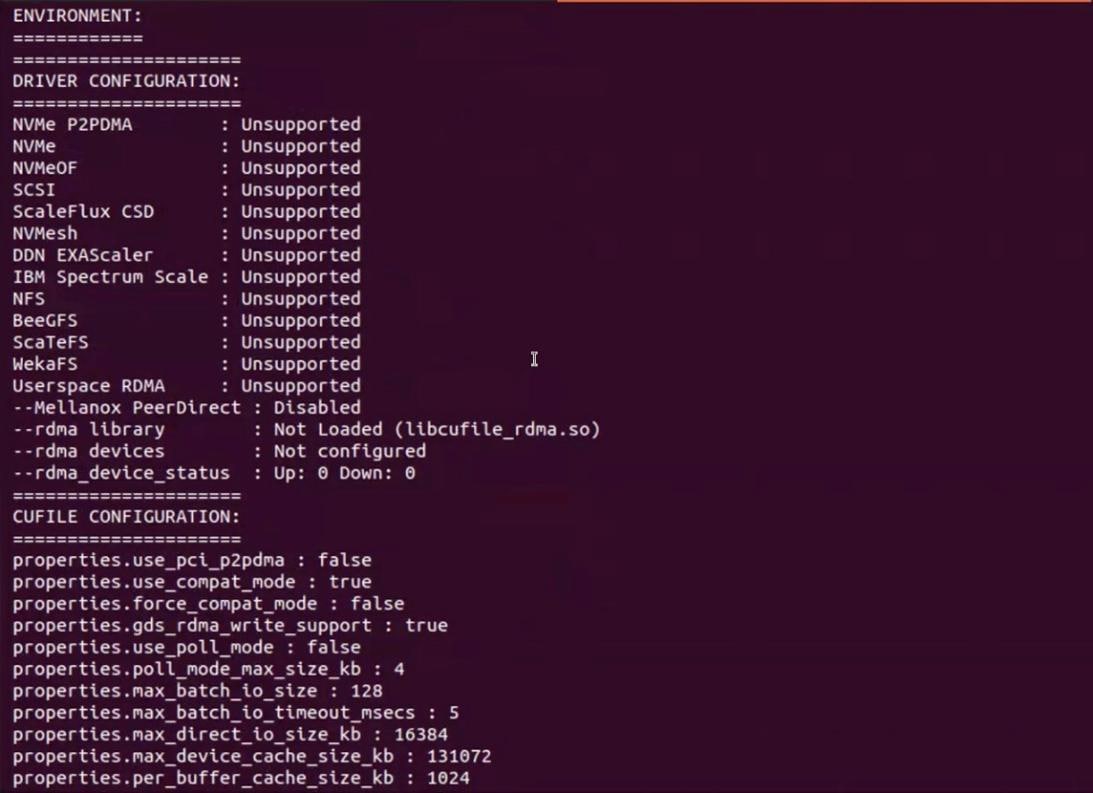

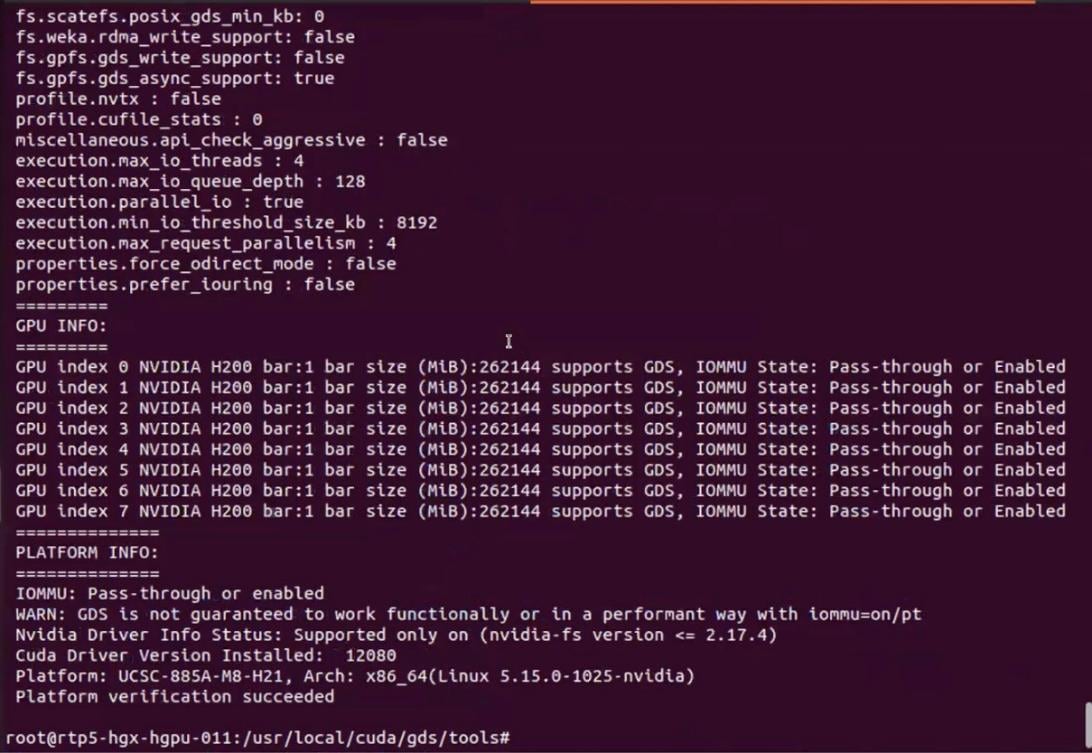

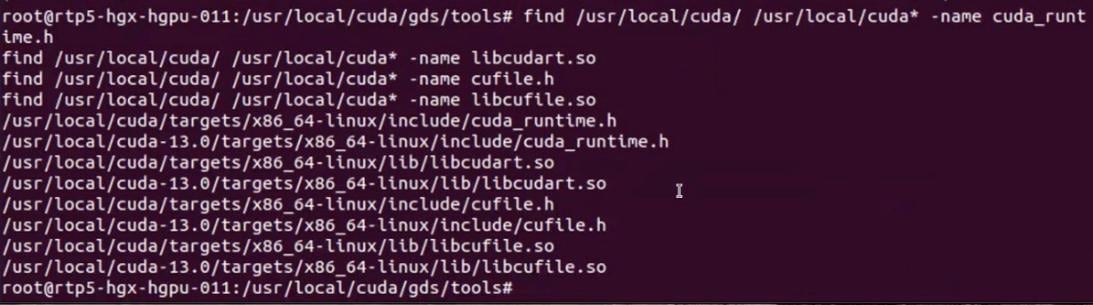

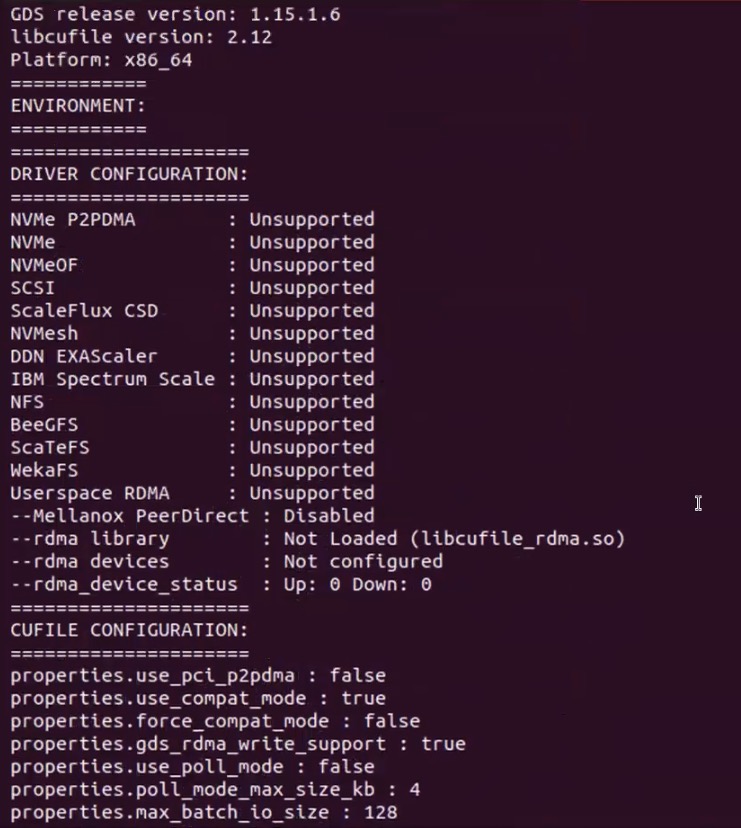

The VAST Data platform supports GPU-accelerated workloads using NVIDIA GPUDirect Storage, enabling direct data movement between VAST storage and GPU memory, bypassing CPU bottlenecks and reducing latency. This capability is particularly beneficial for large-scale AI training and fine-tuning workloads deployed on Cisco UCS C885A GPU servers, where maximizing GPU utilization is critical.

Aligned with NVIDIA Enterprise Reference Architecture (ERA) guidance, the VAST Data on Cisco UCS design enables scalable AI infrastructure by independently scaling VAST nodes alongside Cisco UCS GPU compute nodes. This architecture provides a high-performance, resilient, and operationally simple storage foundation for AI training, fine-tuning, and inference within Cisco AI POD environments.

This section provides the specific hardware and software details used in this deployment (Table 1).

| Component (PID) |

Quantity |

Notes |

| UCS GPU Cluster |

||

| Cisco UCS C885A M8 Servers |

4 Nodes |

|

| NVIDIA H200 SXM5 GPUs |

32 GPUs (total), 8 GPUs per server |

141GB of HBM3e memory each |

| NVIDIA ConnectX-7 NICs |

8 NICs per server |

1x 400GbE NIC for connecting to backend fabric |

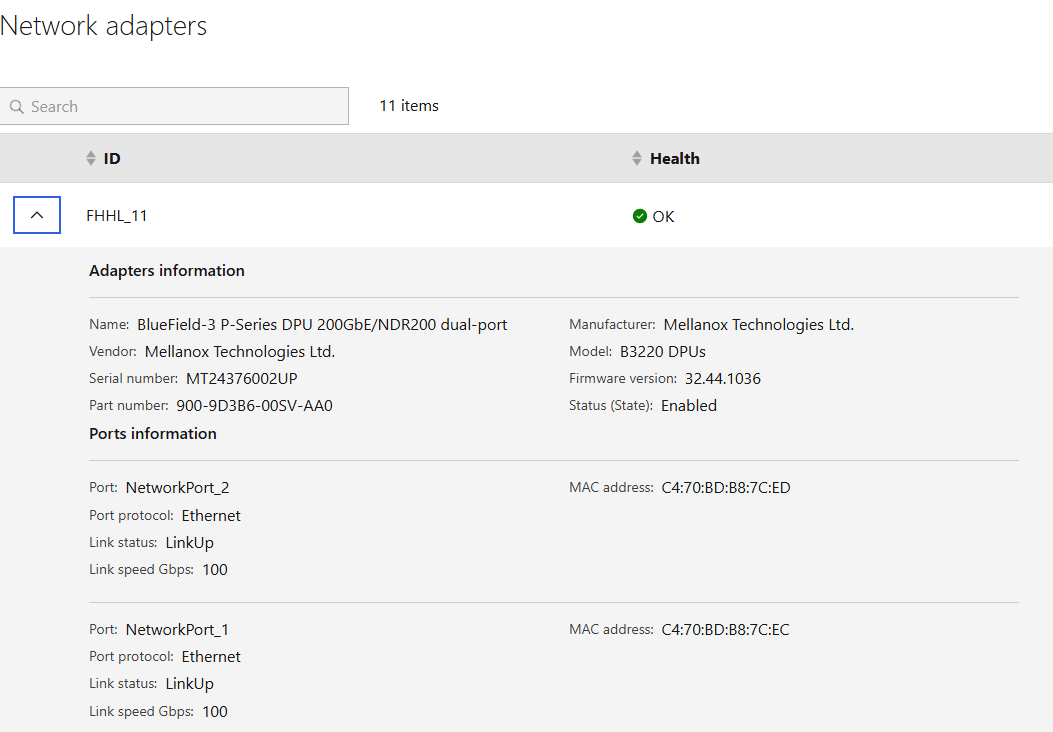

| NVIDIA BlueField-3 NICs |

1 NIC per server |

2x 200GbE NIC for connecting to frontend fabric |

| Backend Fabric |

||

| Cisco Nexus 9332D-GX2B |

2 Spine, 2 Leaf Switches |

400GbE fabric |

| Frontend Fabric |

||

| Cisco Nexus 9364D-GX2A |

2 Spine Switches |

400GbE from Spine to Leaf |

| Cisco Nexus 9332D-GX2B |

2 Compute, 2 Storage Leaf Switches |

200GbE to compute, 2x 200GbE to each VAST EBox node |

| UCS Management Cluster |

||

| Cisco UCS X-Series Direct |

|

|

| UCS X9508 Chassis (UCSX-9508) |

1 |

|

| UCS X Direct 100G (UCSX-S9108-100G) |

2 |

|

| VIC 15231 MLOM (UCSX-ML-V5D200G) |

3 (2x100G mLOM) |

To connect to frontend fabric |

| Storage |

||

| VAST Data |

12 x Cisco EBox nodes |

2x 200G from each node for VAST internal network 2x 200G from each node for VAST external network |

| Software |

||

| NVIDIA AI Enterprise (NVAIE) |

|

Licenses required |

| Cisco Nexus Dashboard |

3 |

3-node physical cluster |

| Cisco Intersight |

N/A |

SaaS platform |

| VAST Data |

N/A |

VAST Data storage and compute Licenses |

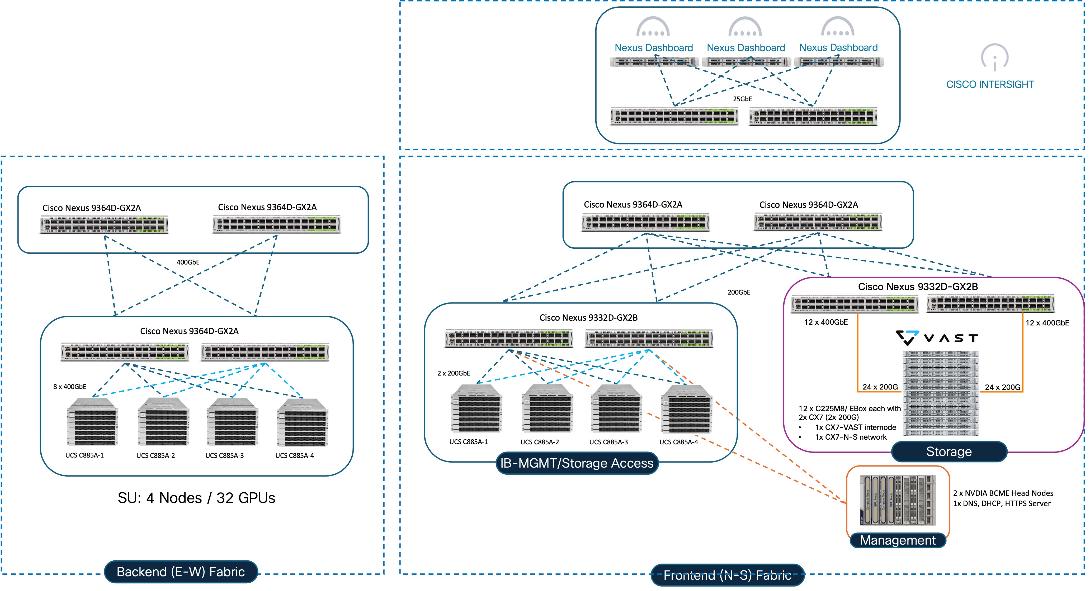

The physical topology for AI PODs with VAST Data and NVIDIA Base Command Manager is as follows:

● Cisco UCS C885A M8 servers each with 8 NVIDIA H200 GPUs

● Cisco UCS X9508 Chassis with eight Cisco UCS X210c Compute Nodes for management and supporting services

● Fifth-generation Cisco UCS X-Series Direct Fabric Interconnects 9108 to support 100GbE connectivity from various components

● High-speed Cisco NX-OS-based Nexus 9332D-GX2B and 9364D-GX2A switching design to support 100GE and 400GE connectivity

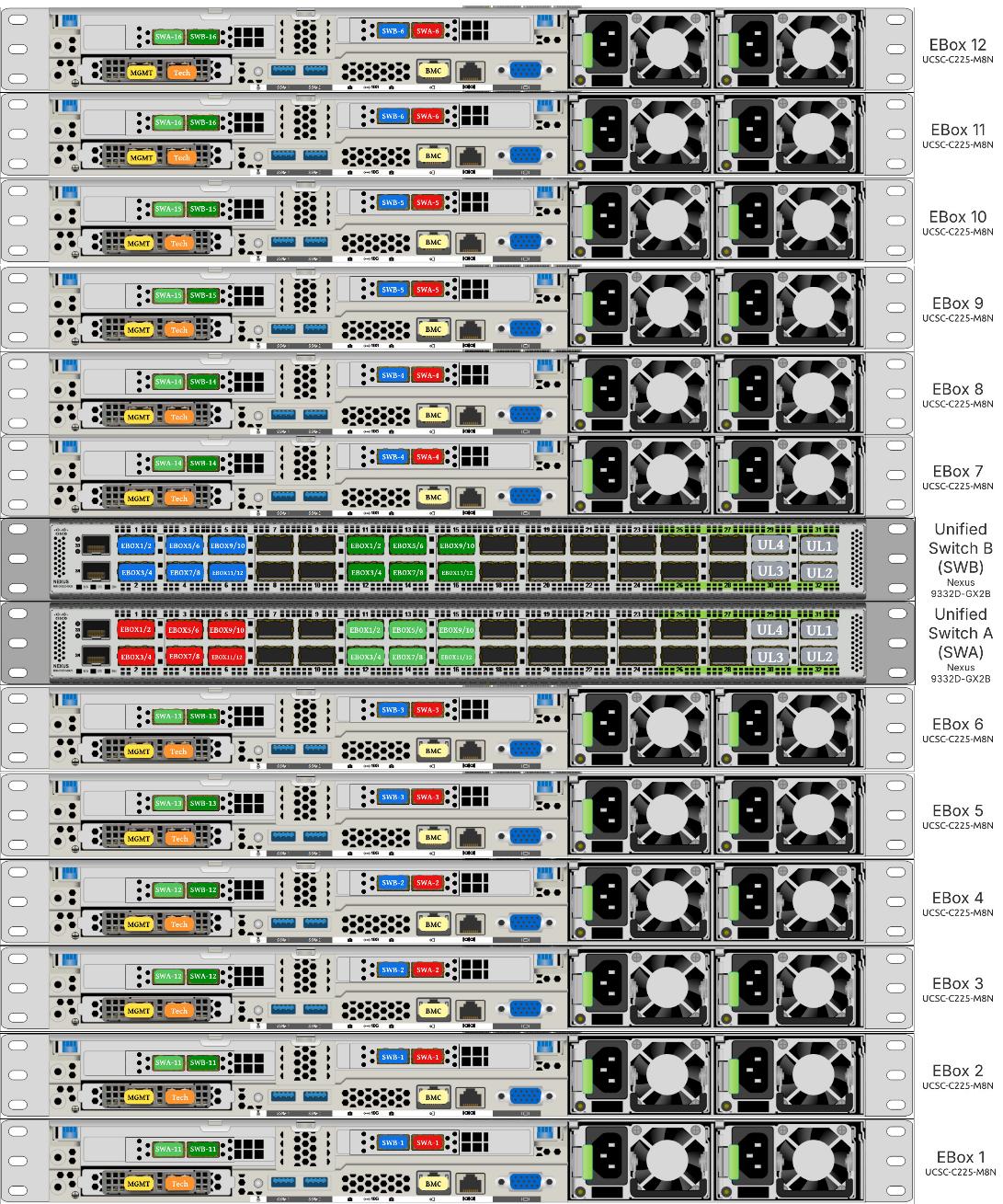

● VAST Data on Cisco UCS, comprising of 12x Cisco UCS C225 M8N nodes certified for Cisco EBox

The software components of this solution consist of:

● Cisco Intersight to deploy, maintain, monitor and support the Cisco UCS server components

● Cisco Nexus Dashboard to deploy, maintain, and support the Cisco Nexus Switching Fabrics

● NVIDIA Base Command Manager to orchestrate training workloads on Ubuntu

● VAST Data on Cisco EBox

AI PODs with VAST Data Topology

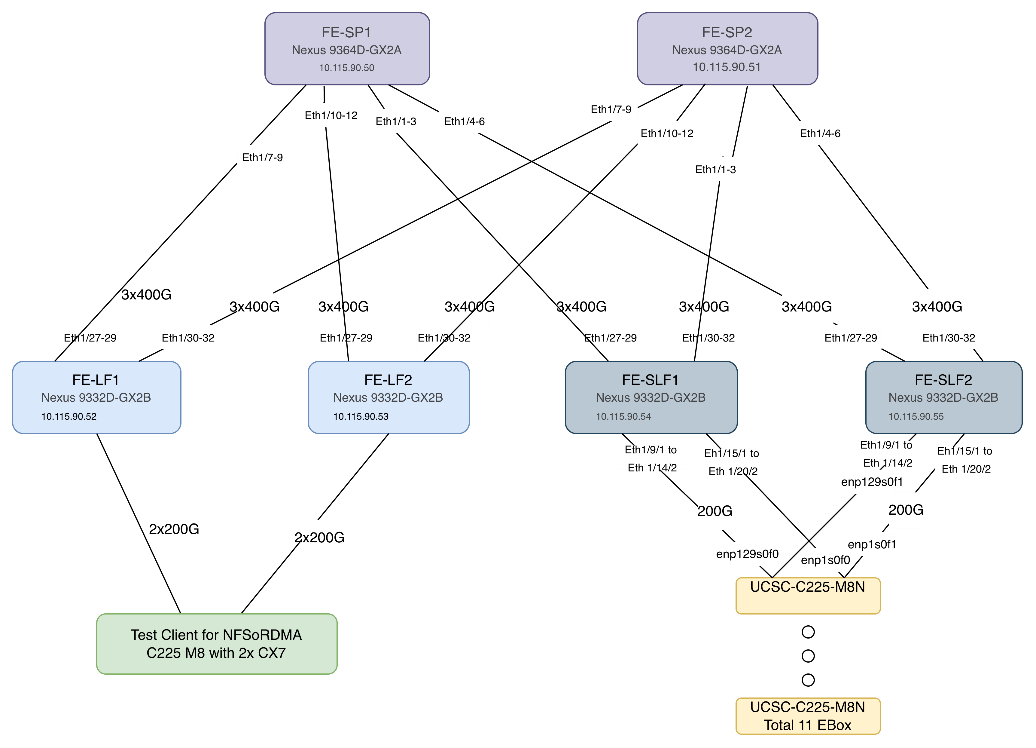

Figure 2 shows various hardware components and the network connections for AI PODs with VAST Data.

The key functional building blocks of this design are:

● Backend (East-West) Fabric is a dedicated, non-blocking 400GbE fabric optimized for inter-node GPU-to-GPU communication. The fabric is built using a minimum of 2 leaf switches and 2 spine switches. It can be scaled to support a max. cluster size of 128 GPUs by adding leaf pairs and larger clusters by adding spine pairs.

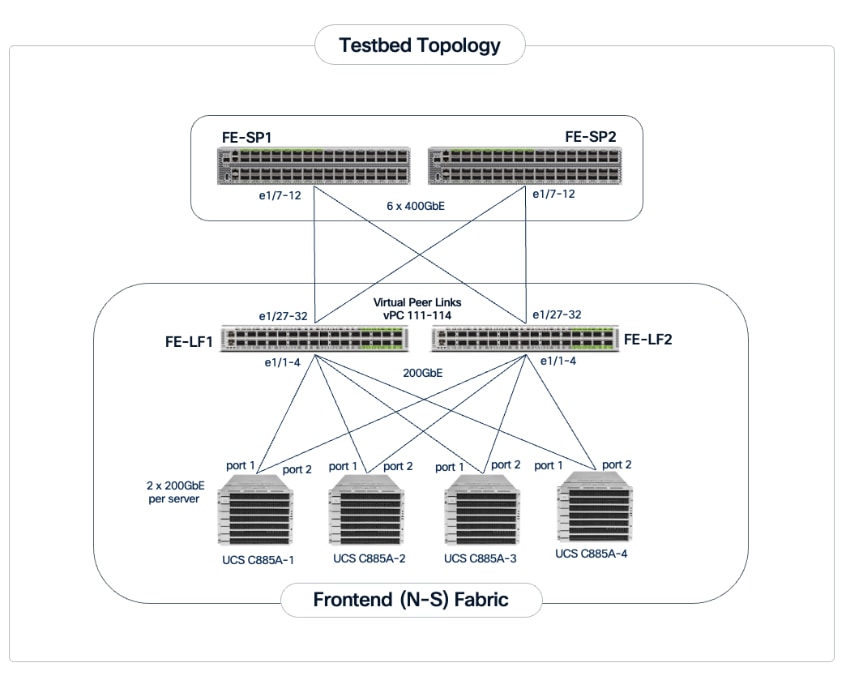

● Frontend (North-South) Fabric is a 400GbE-capable spine-leaf fabric providing connectivity for management, user access, and storage. This fabric uses 2 spine switches and 4 leaf switches as listed below. This fabric can also be scaled as needed by adding or upgrading links or adding switch pairs.

◦ Compute/Management Leaf Pair: Provides 200GbE connectivity for the Cisco UCS C885A nodes and Cisco UCS X-Series Direct management clusters.

◦ Dedicated Storage Leaf Pair: Provides 400GbE connectivity to VAST Data on Cisco EBox , isolating storage I/O from other frontend traffic.

● Scale Unit - Type 1: This building block consists of four Cisco UCS C885A M8 servers connected to two backend leaf switches, forming a 32-GPU cluster. The Cisco UCS C885A M8 servers connect to the backend and frontend fabrics using E-W NICs (8 per server) and N-S NICs (1 per server), respectively. This design can scale by adding more scale units of the same or different types. Additional N-S NICs can also be added as needed — for example, to provide dedicated, high-speed access to storage.

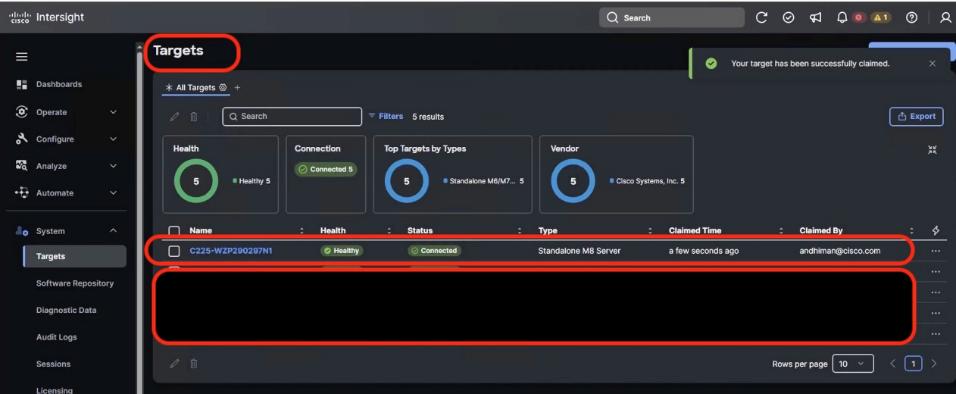

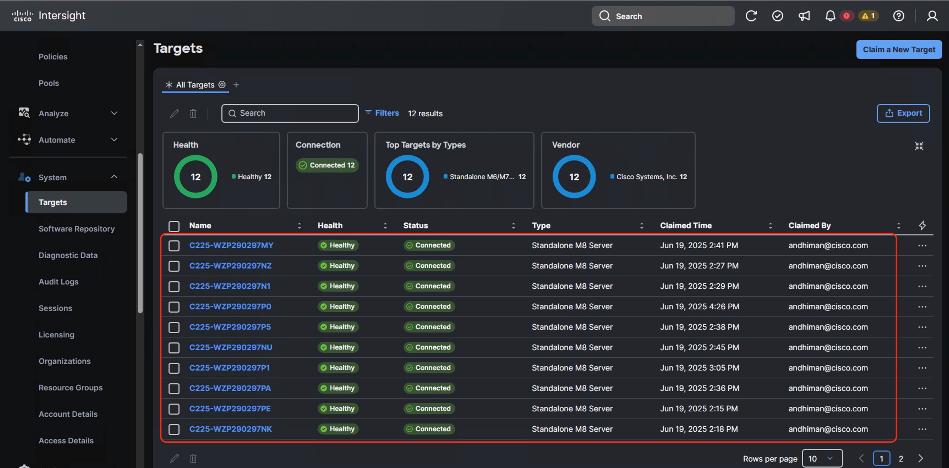

● VAST Data on Cisco EBox leveraging Cisco UCS C225 M8 servers is configured in Intersight Standalone mode with a minimum of twelve (12) nodes for VAST cluster.

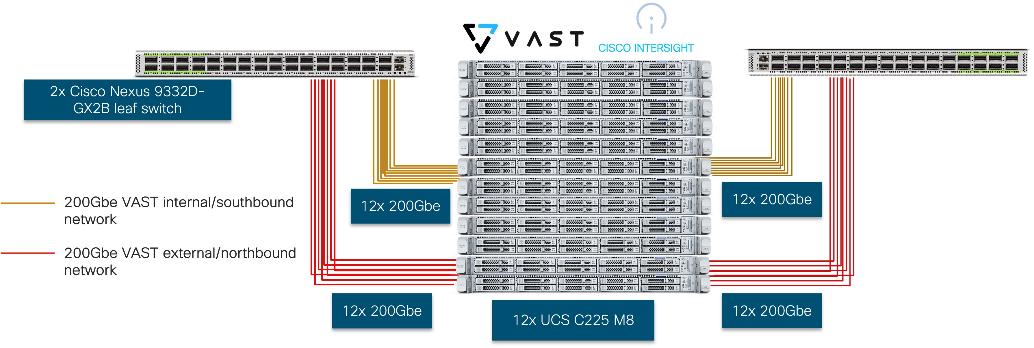

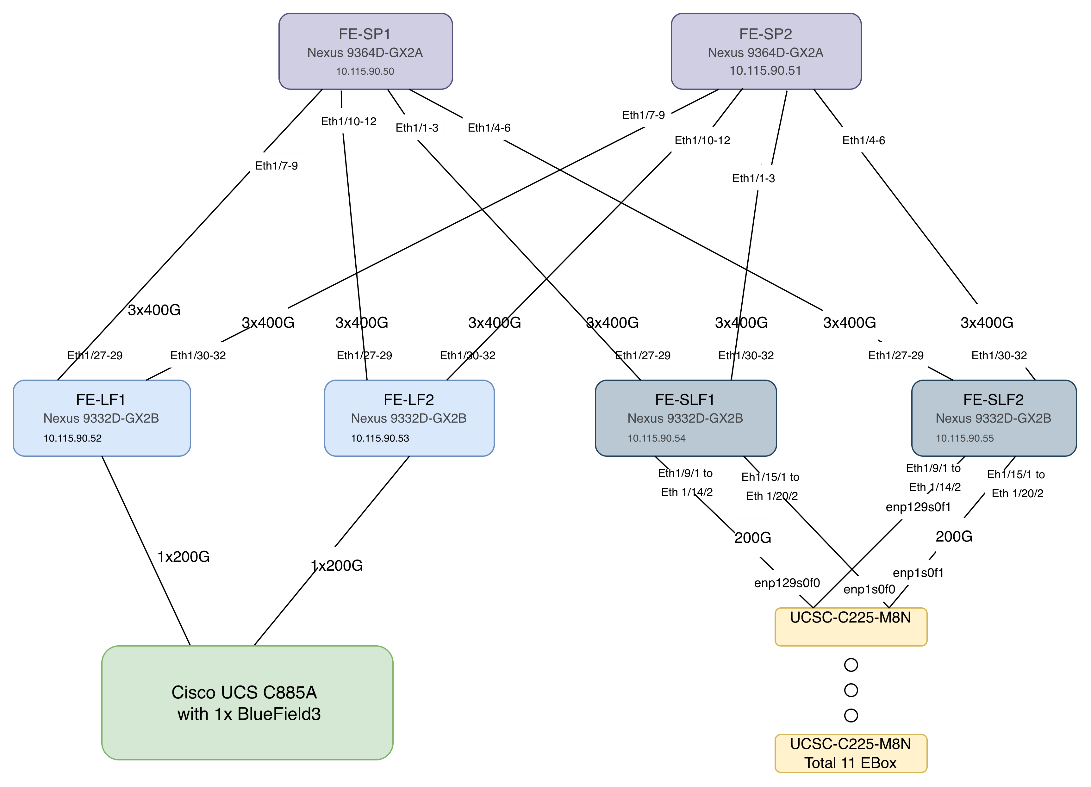

Figure 3 details a high-level deployment of VAST on Cisco UCS C225 M8 (EBox) nodes.

The deployment includes:

● 12 x Cisco UCS C225 M8 servers (EBox) certified for VAST

● 2 x Cisco Nexus 9332D-GX2B or Nexus 9364D-GX2A (leaf switches)

● 2 optics

◦ Optics (passive cables)

- 24x QDD-2Q200-CU3M (400G QSFP56-DD to 2x200G QSFP56 Copper Breakout Cable, 3m)

- 4x QDD-400-CU3M (400G Passive Cable, 3m)

◦ Optics and Fiber

- On 400G switch side, 24x QDD-400G-DR4-S (400G QSFP-DD Transceiver, MPO-16 APC, 100m OM4 MMF),

- On CX7 side, 48x QSFP-200G-SR4-S (200GBASE SR4 QSFP56 Transceiver, MPO, 100m over OM4 MMF)

- MPO breakout cable (Breakout MMF patchcord: MPO-16 to 2X MPO-12)

Note: For certified optics see: https://tmgmatrix.cisco.com.

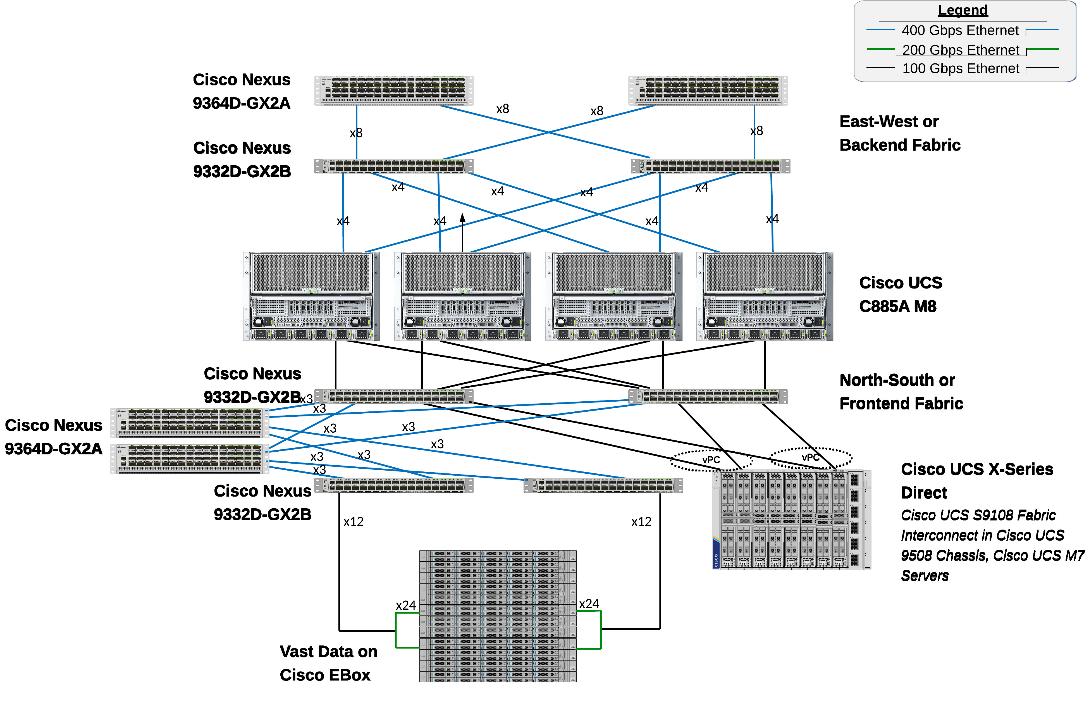

The physical connectivity of the AI POD is designed to maximize throughput and minimize latency. For the 32-GPU cluster, a 2-way rail-optimized topology is implemented. This section details the Connectivity Design and port mapping used in this validated design.

Backend (East-West) Connectivity

The backend fabric is engineered for non-blocking connectivity between GPU servers in the cluster. This is achieved by ensuring that the number of uplinks from leaf-to-spine are equal in number and bandwidth to the number of downlinks from leaf-to-UCS server. As shown in Figure 4, the total number of 400GbE host-facing ports on the leaf switches (32 ports across 4 nodes) is matched by an equal number of 400GbE uplinks to the spine layer, ensuring that GPU synchronization traffic never encounters oversubscription bottlenecks.

Each Cisco UCS C885A node is connected to the two leaf switches in the fabric using a 2-way rail-optimized topology. To achieve this, the 8 x 400GbE connections from each server are distributed across the two leaf switches in the Scale Unit – Type 1. This ensures that GPUs of the same rank, across all nodes in the Scale Unit, connect to the same physical leaf switch, minimizing the network hops required for critical collective operations.

Table 2. Backend Fabric Connectivity

| From |

GPU NICs |

To |

Port Speed |

Connectivity |

| UCS C885A (1-4) |

NICs 1, 3, 5, 7 |

Leaf Switch 1 |

400GbE |

Access VLAN |

| UCS C885A (1-4) |

NICs 2, 4, 6, 8 |

Leaf Switch 2 |

400GbE |

Access VLAN |

| Leaf Switch 1 |

16 x Uplinks – evenly distributed across Spines |

Spine Switch 1-2 |

400GbE |

Routed |

| Leaf Switch 2 |

16 x Uplinks – evenly distributed across Spines |

Spine Switch 1-2 |

400GbE |

Routed |

Each Cisco UCS C885A server was equipped with 8 x NVIDIA ConnectX-7 (1 x 400GbE) NICs, one per GPU for connecting to the backend fabric. NVIDIA BlueField-3 NICs can also be used as E-W NICs.

Figure 5 illustrates the backend topology used to validate this solution.

Frontend (North-South) Connectivity

The frontend fabric provides the data path for cluster management, storage, services and external access. Each Cisco UCS C885A server connects to the compute/leaf switches in the frontend fabric using 2 x 200GbE links. The uplinks to the frontend fabric are configured as a LACP bond for high availability. The management and storage access traffic are deployed in different VLANs and trunked on this bonded interface.

The Cisco UCS X-Series management cluster also connect to the same compute leaf switches that the Cisco UCS C885A servers connect to. These leaf switches also provide access to Cisco Intersight and Nexus Dashboard for managing this environment.

VAST Data on Cisco EBox, in this design connects to dedicated frontend storage leaf switches using multiple high bandwidth 400GbE links to support concurrent NFS and S3 traffic.

Table 3. Frontend Fabric Connectivity

| From |

To |

Connectivity |

Traffic Type |

| UCS C885A Nodes |

Compute Leaf Pair |

2-Port LACP Bond |

VLAN Trunk (Management & Storage) |

| UCS X-Series Direct |

Compute Leaf Pair |

Multi-Port LACP Port-Channel |

VLAN Trunk (Management/Control) Plane) |

| VAST Data |

Storage Leaf Pair |

VAST unified connectivity (VAST internal network and VAST Client Network on same laf pair switches) |

VLAN Trunk (NFS & S3) |

The detailed connectivity from UCS C885A nodes to the compute/management leaf pair is shown in Figure 6.

Connectivity for VAST Data on Cisco EBox

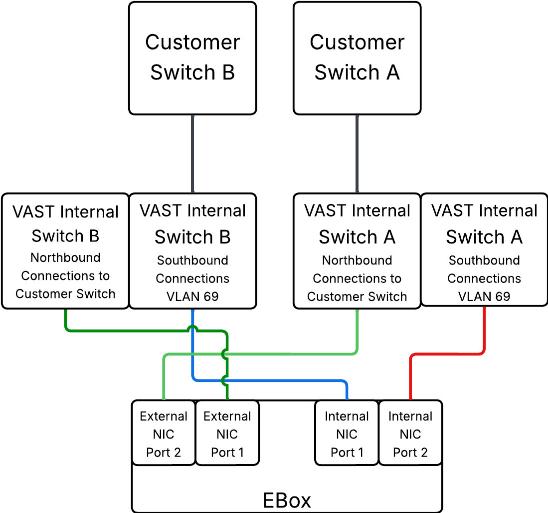

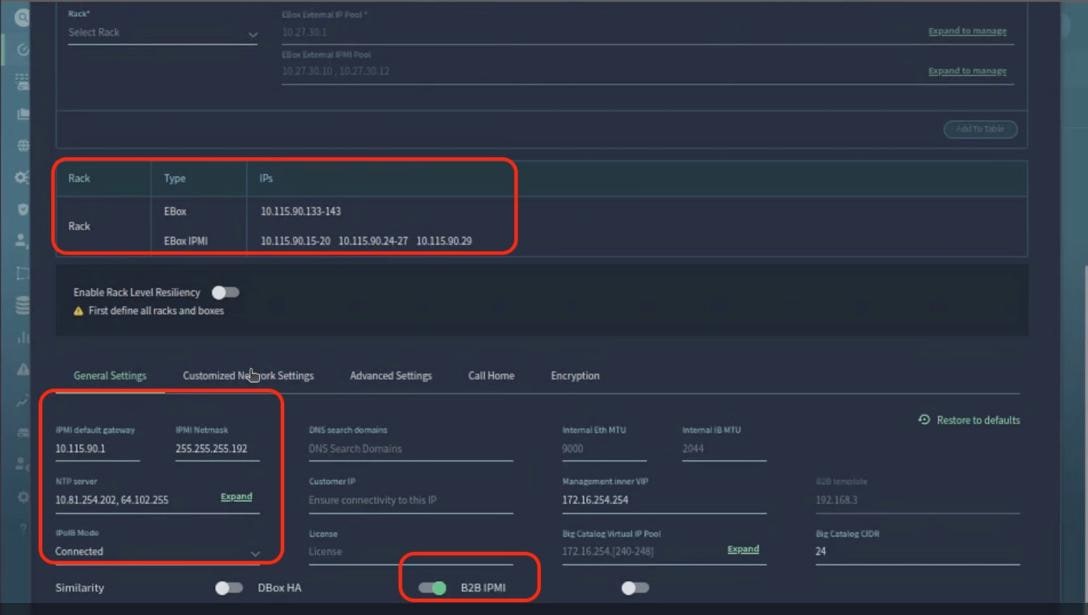

In the deployment both the internal/southbound network ports (from network adapter in PCI Slot 3) and the external/customer/northbound network ports (from network adapter in PCI slot 1) are connected to the same pair of 400G Cisco Nexus switches.

Figure 7 details the connectivity of Cisco UCS C225 M8 server (EBox) with a single pair of Cisco Nexus 9332D-GX2B switches.

The following are the labelling instructions for Figure 8:

● SWA is defined as switch A.

● SWB is defined as switch B.

● Ports marked in blue and red are used for VAST internal network.

● Ports marked in light and dark green are used for connectivity to customer network or external network.

● Number of uplinks or connections to spine switches is dependent on the number of EBox connected to the switch pair. If you have 12 EBox nodes, you need 4x 400G uplinks from each switch.

● In this deployment, a 400G to 2x 200G breakout cable (QDD-2Q200-CU3M) was used allowing connections to 400G ports on switch side and 200G ports on CX7 adapter for each EBox. For example:

◦ EBox1 and EBox2 both marked with SWA-11 connects to Port 11 of Switch A

◦ EBox1 and EBox2 both marked with SWB-11 connects to Port 11 of Switch B

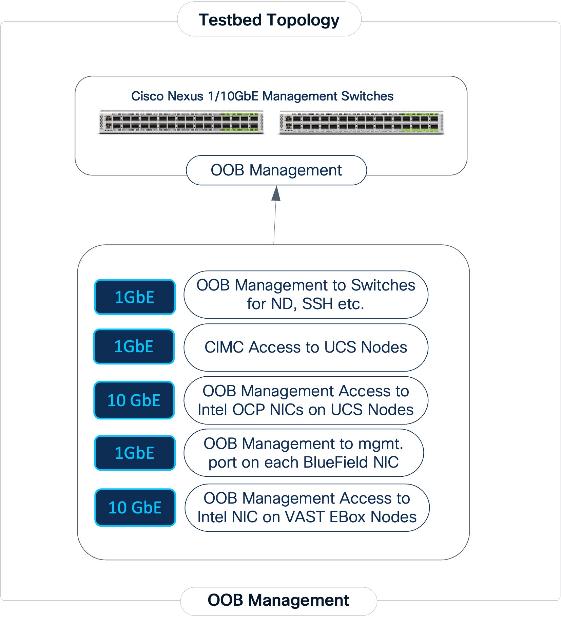

Out-of-Band Management Connectivity

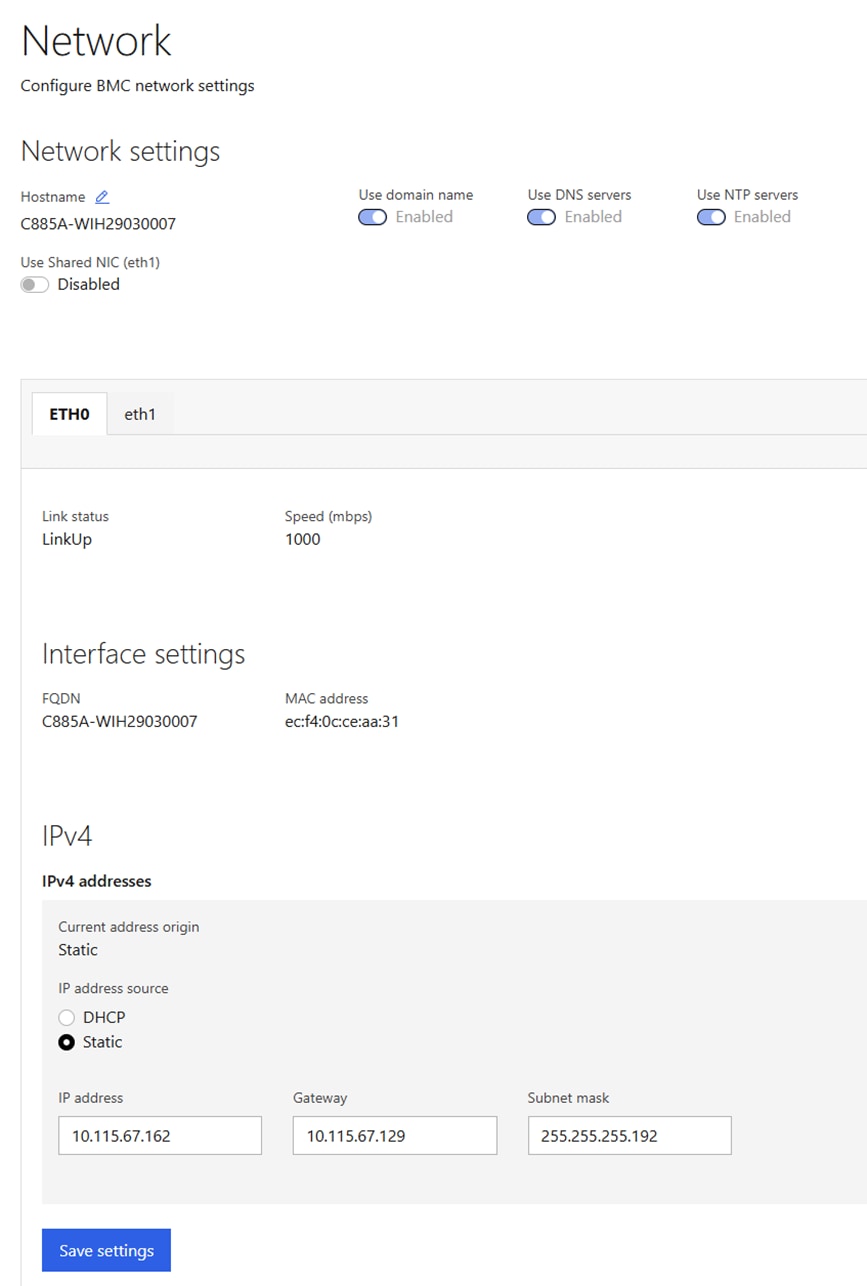

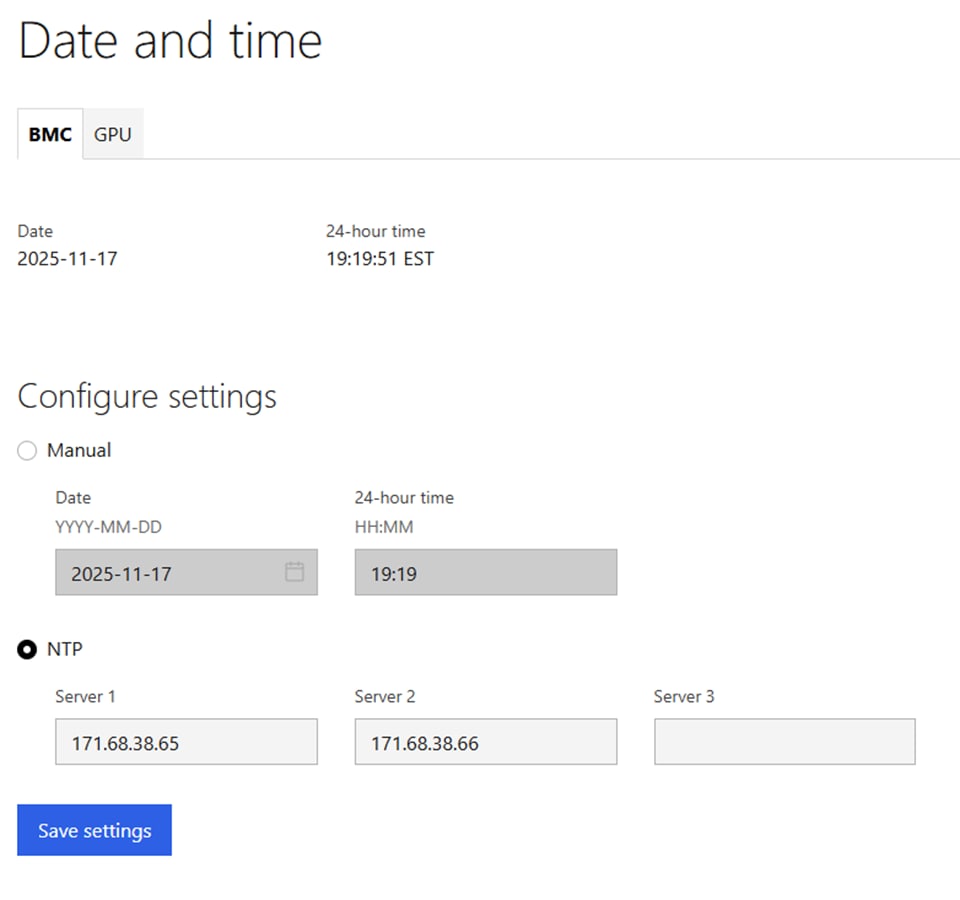

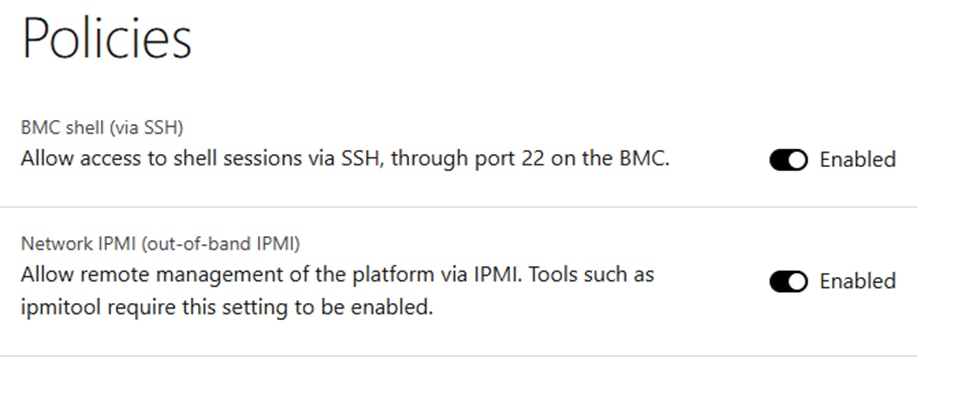

All switches and servers in the topology are connected to dedicated Out-of-Band (OOB) management network and for initial provisioning via CIMC and Redfish and as a backup path to access the devices.

The VAST EBox nodes management ports (MGMT/enp65s0f0) are also connected to same Out-of-Band (OOB) management network.

This section provides the specific design details for the compute, network, and storage sub-systems for solution validated in Cisco labs.

Backend (East-West) Fabric

The backend fabric is a lossless, low-latency, high-throughput ethernet fabric, designed to support the stringent performance requirements of GPU-to-GPU RDMA communication. This fabric is exclusively for inter-node RDMA over Ethernet (RoCEv2) GPU communication. As stated earlier, this fabric is deployed as a two-tier spine-leaf Clos topology using a MP-BGP VXLAN EVPN architecture, providing a multi-tenant environment with flexible support for both scalable Layer 2 and Layer 3 overlays across an IP underlay. In this design, a layer 2 overlay where all 32 GPUs reside in a single logical broadcast domain, simplifying the communication patterns required by AI frameworks.

Table 4. UCS GPU Node Connectivity to Backend Fabric

| From |

E-W NIC |

Connectivity |

To |

Logical Connectivity |

| UCS C885A Nodes (1-4) |

1/1, 1/3, 1/5, 1/7 (Access Ports) |

Rail Optimized (2-way) |

Backend Leaf 1 |

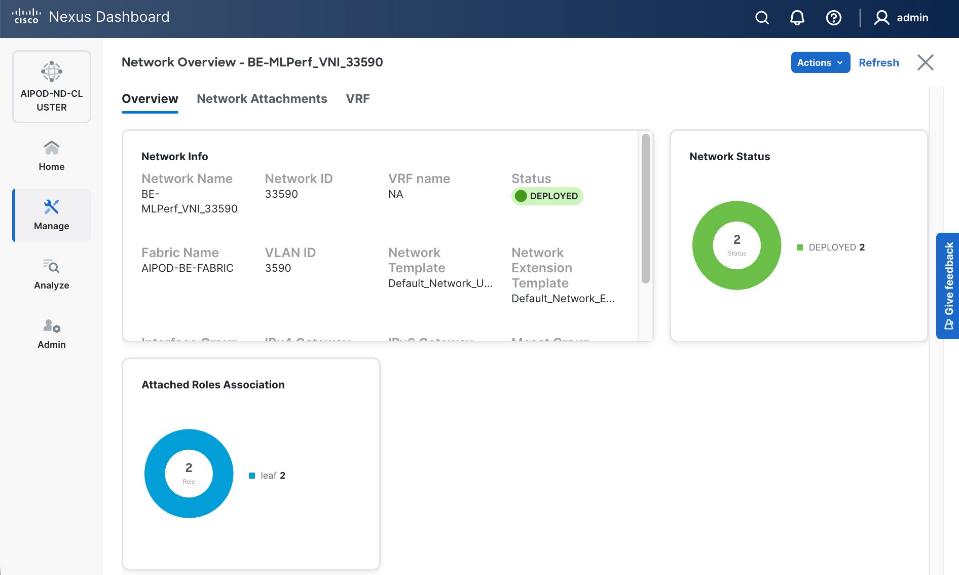

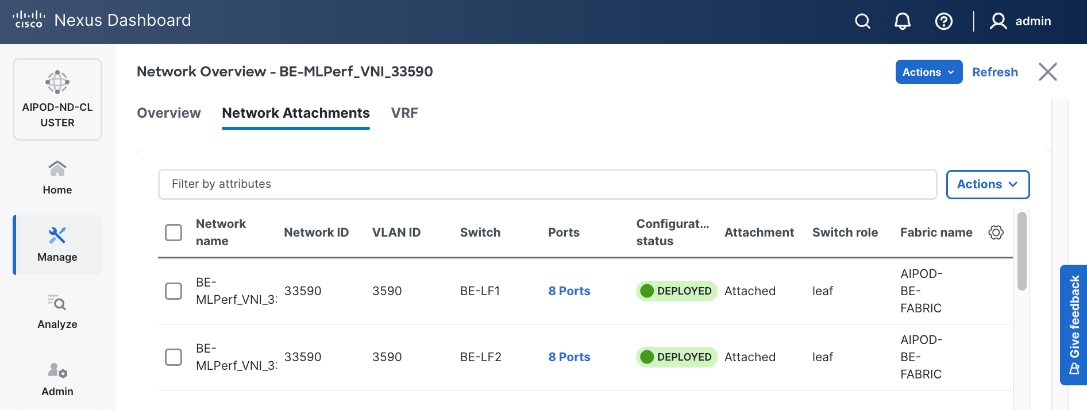

Access VLAN (3590) mapped to L2 VNI (33590) |

| UCS C885A Nodes (1-4) |

1/2, 1/4, 1/6, 1/8 (Access Ports) |

Rail Optimized (2-way) |

Backend Leaf 2 |

Access VLAN (3590) mapped to L2 VNI (33590) |

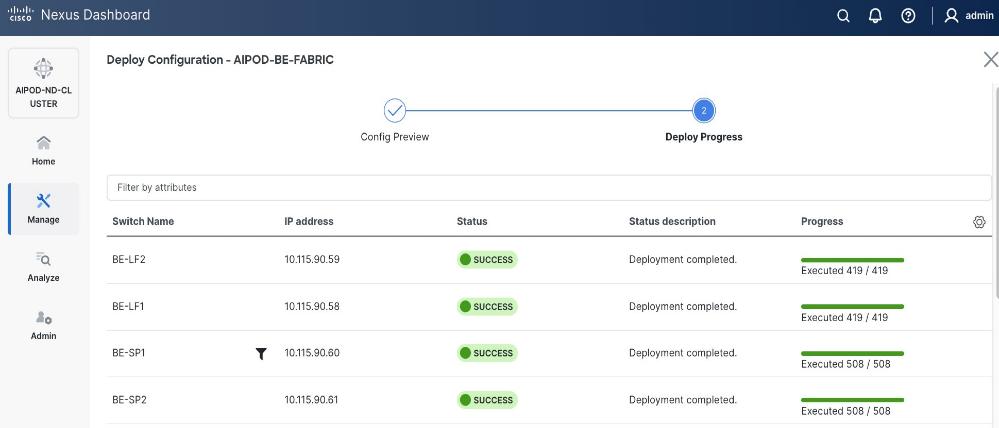

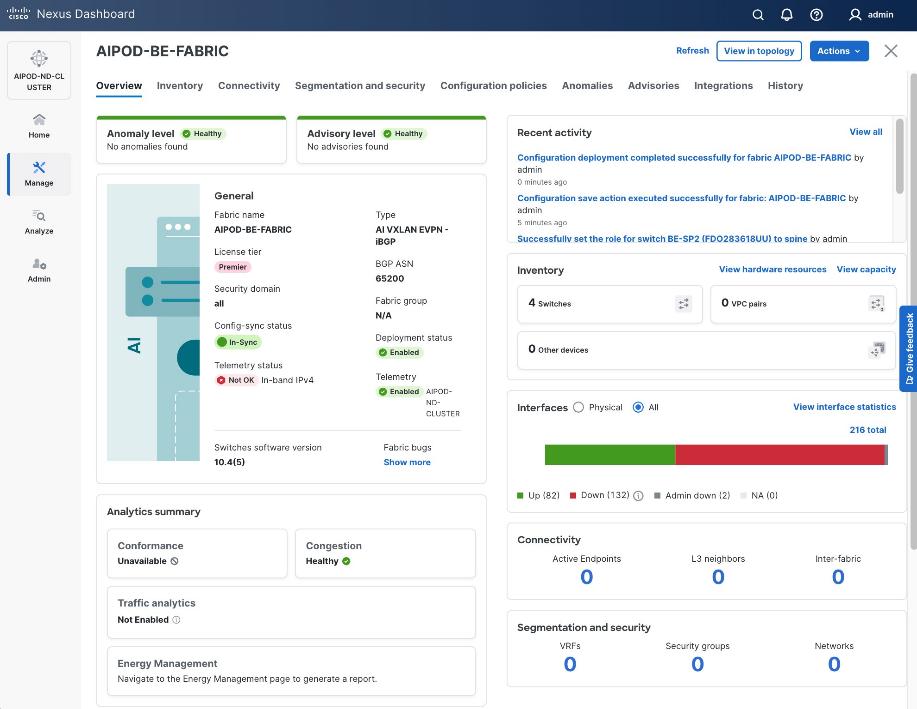

The fabric is deployed using pre-built fabric templates available in Nexus Dashboard, implementing a prescriptive, best-practice design for the backend fabric as shown in Figure 10. Though the templates implement a specific configuration, organizations still have the flexibility of customizing some or all aspects of the template as needed.

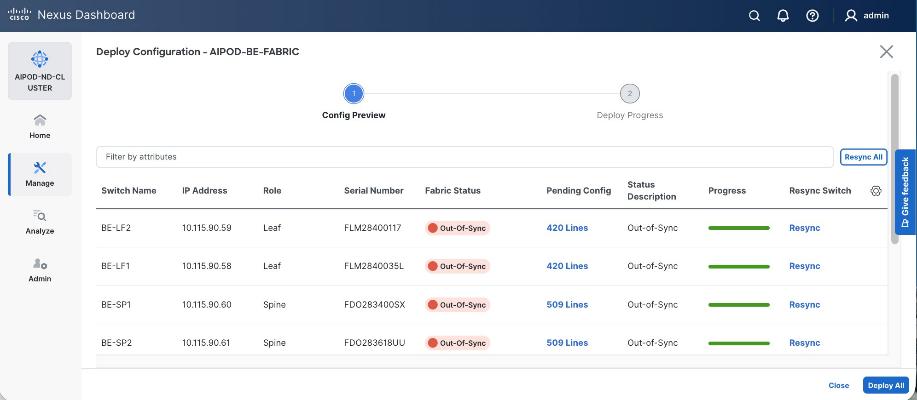

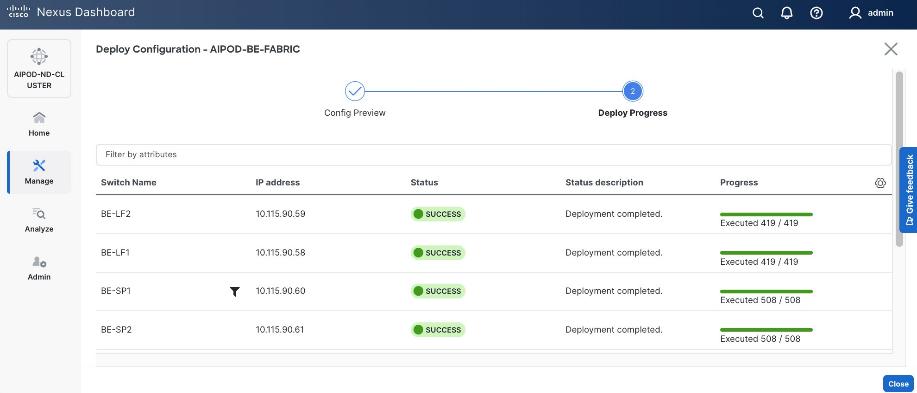

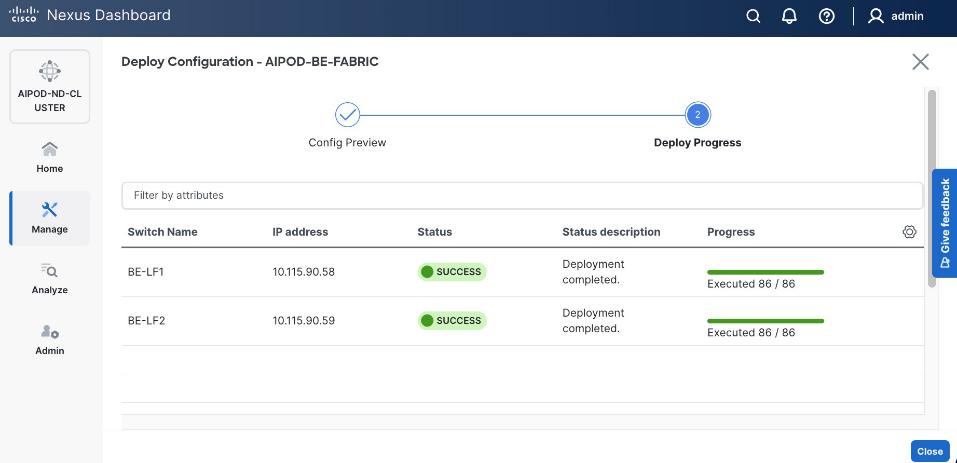

When the connectivity is in place, these templates enable the fabric to be provisioned and deployed quickly. The 2-spine, 2-leaf backend fabric in this design with over 400 lines of configuration (see Figure 11) was deployed in minutes.

The design uses QoS features outlined below to create a lossless environment for RoCEv2 traffic, preventing packet drops during bursty synchronization events. The QoS policy is implemented (default) by the deployed AI/ML template.

● Traffic Classification: A dedicated class-map (COS 3) is used to identify RoCEv2 synchronization traffic.

● Priority Flow Control (PFC): PFC is enabled on COS 3 to provide hop-by-hop flow control. This ensures that in the event of congestion, the switch can signal the upstream device to pause transmission, preventing packet drops.

● Explicit Congestion Notification (ECN): ECN is configured with specific WRED (Weighted Random Early Detection) thresholds. This allows the Nexus switches to mark packets when buffers begin to fill, signaling the GPU endpoints to throttle their transmission rate before PFC is triggered, maintaining a smooth data flow.

● MTU: A global MTU of 9000 (Jumbo Frames) is applied across all links in the fabric to ensure large AI data packets are processed efficiently without fragmentation.

In this implementation, the default QoS policy in the deployed template was modified to support this design. The key changes are:

● MTU for PFC3 traffic changed from X to Y.

● QoS Bandwidth Allocation changed to allocate more bandwidth for RDMA traffic since this backend fabric is dedicated to this type of traffic. The only other traffic in this network is a small amount of control and management traffic.

The Cisco UCS GPU nodes are added to the Red Hat OpenShift cluster as worker nodes. The networking connectivity to the backend fabric is deployed and provisioned using Kubernetes NMState Operator and NVIDIA’s Network Operator. The GPU Direct RDMA and overall deployment of GPU is implemented using NVIDIA’s GPU Operator . All operators are available from Red Hat’s Operator Hub, directly accessible from OpenShift cluster console.

The Layer 2 (overlay) connectivity between the 4 Cisco UCS C88A M8 nodes across the backend fabric requires the following changes on the Cisco UCS nodes.

Frontend (North-South) Fabric

The frontend fabric provides Cisco UCS GPU nodes with connectivity to management, services, storage, and to other networks within and external to the enterprise. In a hybrid deployment, inferencing traffic from users and application also use this fabric.

Similar to the backend fabric, the frontend is also deployed in a two-tier spine-leaf Clos-based topology, using a MP-BGP VXLAN EVPN architecture. Both Layer 2 and Layer 3 overlays are used to logically segment the different types of traffic on this network.

Quality of Service (QoS), including PFC and ECN, was deployed to ensure that NFS RDMA traffic to VAST Storage was prioritized across the frontend fabric when there is congestion.

Table 5 lists VLANs configured for setting up the environment along with their usage.

| VLAN ID |

Name |

Usage |

IP Subnet used in this deployment |

| 2* |

Native-VLAN |

Use VLAN 2 as native VLAN instead of default VLAN (1) |

|

| 550* |

OOB-MGMT-VLAN |

Out-of-band management VLAN to connect management ports for various devices |

10.115.90.0/26; GW: 10.115.90.1 |

| 703 |

Ubuntu-BareMetal-MGMT |

Routable VLAN used for Ubuntu management |

10.115.90.64/26; GW: 10.115.90.126 |

| 3051 |

NFS |

Used for Ubuntu storage |

192.168.51.0/24 |

| 10 |

VAST-Discovery_VLAN10 |

Used for discovery of nodes during VAST cluster install |

|

| 3056 |

VAST-Client_VLAN_3056 |

VAST external storage network |

192.168.56.24 |

| 69 |

VAST-Storage_VLAN_69 |

VAST internal network |

|

Table 6 lists the VMs or bare metal servers necessary for deployment as outlined in this document.

| Virtual Machine Description |

VLAN |

IP Address |

Comments |

| AD1 |

703 |

10.115.90.123 |

Hosted on pre-existing management infrastructure |

| AD2 |

703 |

10.115.90.124 |

Hosted on pre-existing management infrastructure |

| NVIDIA Base Command Head Node |

703 |

10.115.90.115 |

Hosted on pre-existing management infrastructure |

Table 7 lists the software revisions for various components of the solution.

| Layer |

Device |

Image Bundle |

Comments |

| Compute |

Cisco UCS C885A M8 Firmware Package |

1.1(0.250025) |

Upgrades all server components |

| Cisco UCS X210c M6 |

5.3(5.250021) |

||

| Cisco UCS Fabric Interconnect 9108 100G |

4.3(5.240162) |

||

| Network |

Cisco Nexus Dashboard |

4.1.1g |

|

| Cisco Nexus 9332D-GX2B NX-OS |

10.4(5) |

||

| Cisco Nexus 9364D-GX2A NX-OS |

10.4(5) |

||

| Storage |

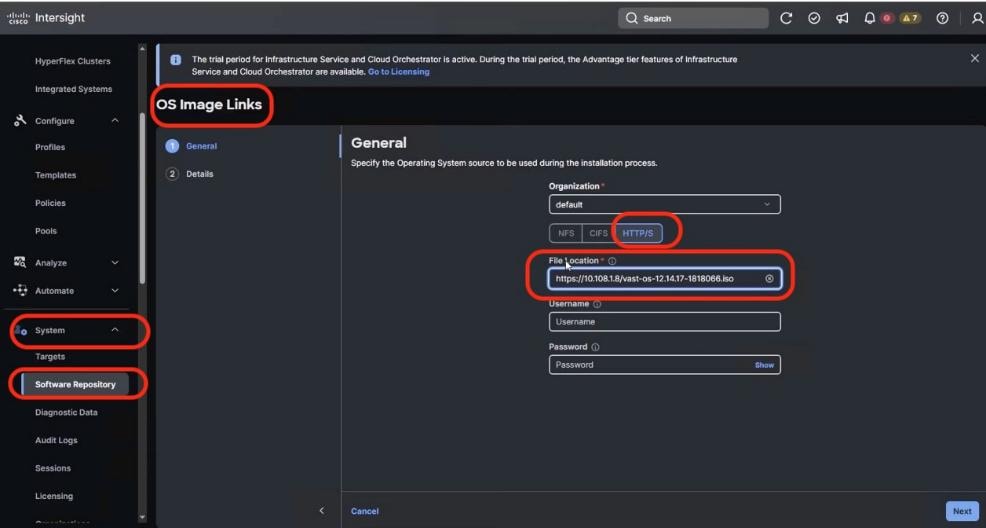

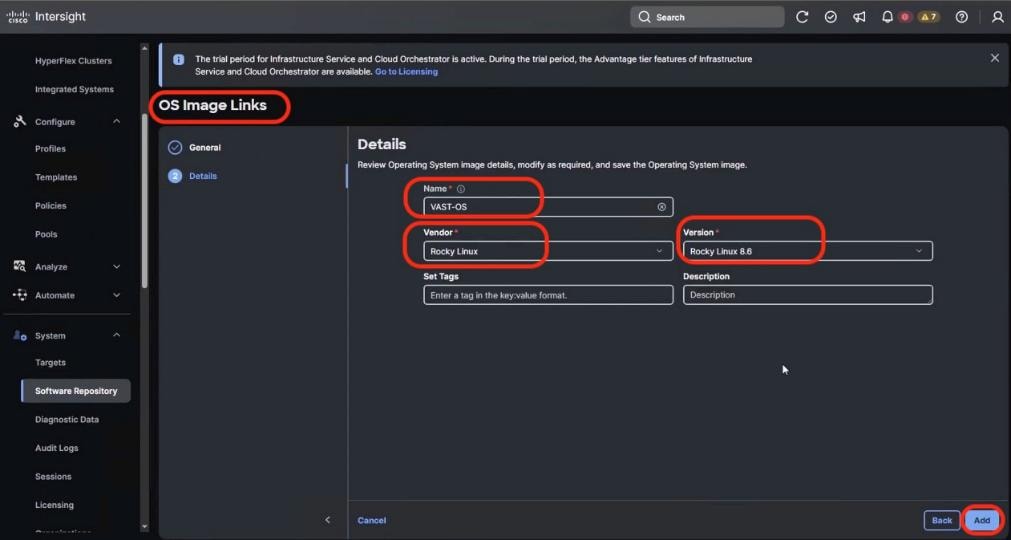

VAST OS |

vast-os-12.14.27-1879753 |

|

| VAST VMS |

release-5.3.3-hf5-2058254 |

||

| Software |

NVIDIA H200 GPU Driver - Ubuntu |

570.133.20 |

|

| NVIDIA H200 GPU CUDA Version-Ubuntu |

12.8 |

Network Switch Configuration

This chapter contains the following:

Cisco Nexus Frontend Fabric Setup

Cisco Nexus Backend Fabric Setup

In this lab configuration, Cisco Nexus Dashboard is used to create and configure the Backend and Frontend Fabrics. Nexus Dashboard is available in both physical and virtual form factors. In this lab configuration, three Nexus Dashboard physical nodes were installed into a cluster. See Nexus Dashboard Capacity Planning and Cisco Nexus Dashboard Data Sheet to determine the form factor and cluster size for your deployment, then install Nexus Dashboard

Cisco Nexus Frontend Fabric Setup

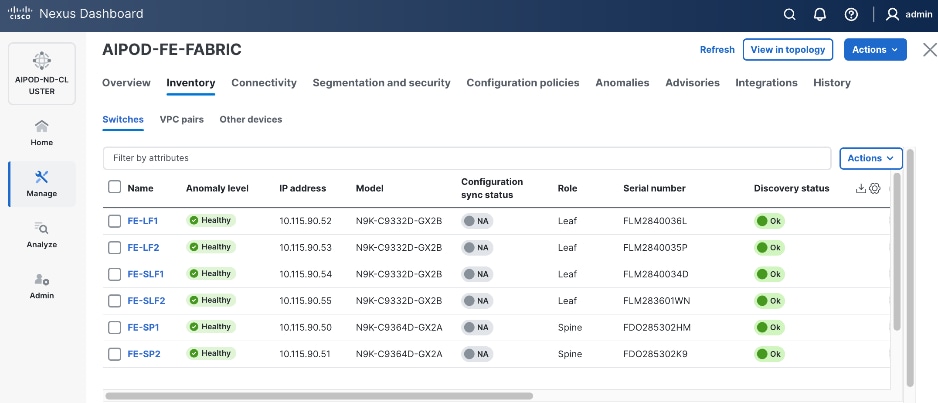

In this setup, the Nexus Frontend Fabric consisted of 2 spine and 4 leaf switches. This fabric was cabled as listed in Table 3. The fabric switch details are listed in Table 8.

Table 8. Frontend Fabric Switch Details

| Switch |

Role |

OOB IP |

Firmware |

Model |

| FE-LF1 |

Leaf |

10.115.90.52 |

10.4(5) |

Cisco Nexus 9332D-GX2B |

| FE-LF2 |

Leaf |

10.115.90.53 |

10.4(5) |

Cisco Nexus 9332D-GX2B |

| FE-SLF1 |

Storage Leaf |

10.115.90.54 |

10.4(5) |

Cisco Nexus 9332D-GX2B |

| FE-SLF2 |

Storage Leaf |

10.115.90.55 |

10.4(5) |

Cisco Nexus 9332D-GX2B |

| FE-SP1 |

Spine |

10.115.90.50 |

10.4(5) |

Cisco Nexus 9364D-GX2A |

| FE-SP2 |

Spine |

10.115.90.51 |

10.4(5) |

Cisco Nexus 9364D-GX2A |

Physical Connectivity

Note: Follow the physical connectivity guidelines for AIDPODs with VAST Data as explained in Connectivity Design section.

Initial Configuration of Switches

The following procedures describe this basic configuration of the Cisco Nexus frontend fabric switches for use in the existing environment. This procedure assumes the use of Cisco Nexus 9000 10.4(5), the Cisco suggested Nexus switch release at the time of this validation.

Procedure 1. Set up initial configuration from a serial console

Step 1. Set up the initial configuration for each backend fabric switch as listed in Table 8.

Step 2. Configure the switch.

Note: On initial boot, the NX-OS setup automatically starts and attempts to enter Power on Auto Provisioning.

Abort Power On Auto Provisioning [yes - continue with normal setup, skip - bypass password and basic configuration, no - continue with Power On Auto Provisioning] (yes/skip/no)[no]: yes

Disabling POAP.......Disabling POAP

poap: Rolling back, please wait... (This may take 5-15 minutes)

---- System Admin Account Setup ----

Do you want to enforce secure password standard (yes/no) [y]: Enter

Enter the password for "admin": <password>

Confirm the password for "admin": <password>

Would you like to enter the basic configuration dialog (yes/no): yes

Create another login account (yes/no) [n]: Enter

Configure read-only SNMP community string (yes/no) [n]: Enter

Configure read-write SNMP community string (yes/no) [n]: Enter

Enter the switch name: <nexus-hostname>

Continue with Out-of-band (mgmt0) management configuration? (yes/no) [y]: Enter

Mgmt0 IPv4 address: <nexus-out_of_band_mgmt0-ip>

Mgmt0 IPv4 netmask: <nexus-mgmt0-netmask>

Configure the default gateway? (yes/no) [y]: Enter

IPv4 address of the default gateway: <nexus-mgmt0-gw>

Configure advanced IP options? (yes/no) [n]: Enter

Enable the telnet service? (yes/no) [n]: Enter

Enable the ssh service? (yes/no) [y]: Enter

Type of ssh key you would like to generate (dsa/rsa) [rsa]: Enter

Number of rsa key bits <1024-2048> [2048]: Enter

Configure the ntp server? (yes/no) [n]: Enter

Configure default interface layer (L3/L2) [L2]: Enter

Configure default switchport interface state (shut/noshut) [noshut]: Enter

Enter basic FC configurations (yes/no) [n]: n

Configure CoPP system profile (strict/moderate/lenient/dense) [strict]: Enter

Would you like to edit the configuration? (yes/no) [n]: Enter

Step 3. Review the configuration summary before enabling the configuration:

Use this configuration and save it? (yes/no) [y]: Enter

Step 4. Repeat this procedure for all switches listed in Table 8.

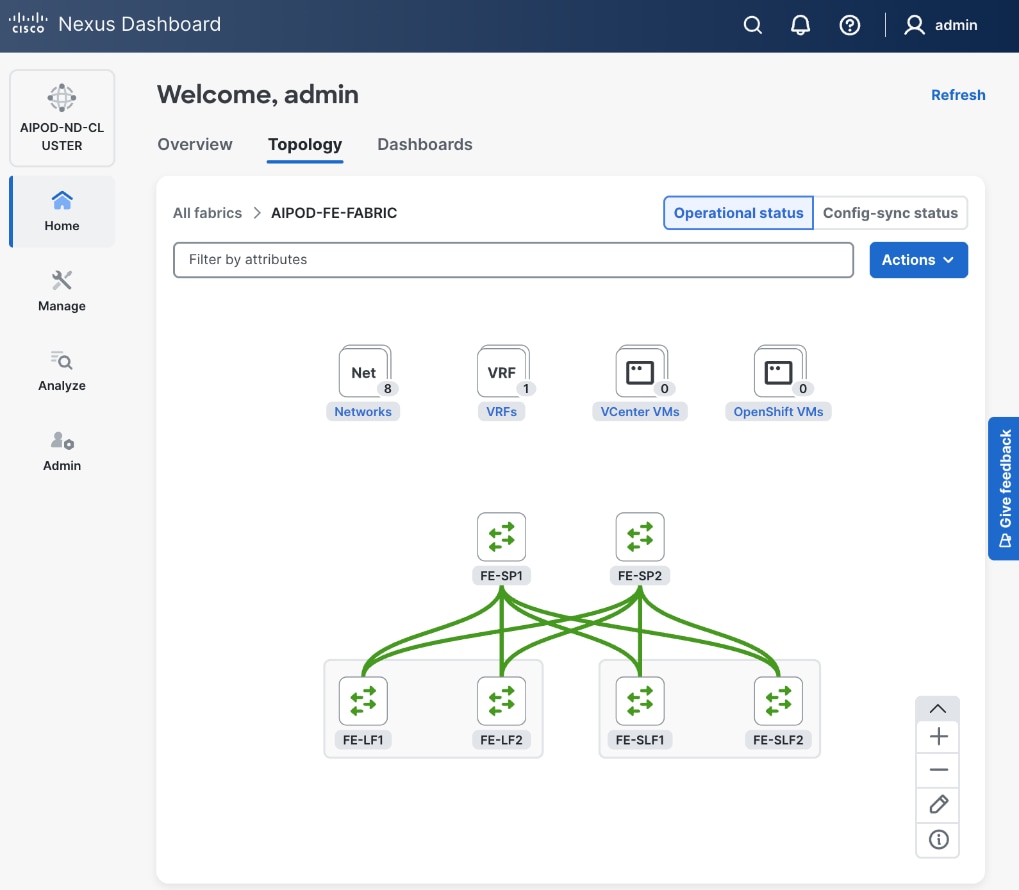

Deploy Frontend Fabric Using Nexus Dashboard

The procedures outlined in this section will use Cisco Nexus Dashboard (ND), specifically the fabric templates provided by ND, to deploy the frontend (FE) fabric in the AI POD solution. The frontend fabric is a 2-tier, 3-stage spine-leaf Clos topology, built using Cisco Nexus 9000 series data center switches. Once the fabric is deployed, ND will be used to provision connectivity between various infrastructure components connected to the frontend fabric. The Cisco UCS GPU servers in the AI POD training cluster will use the frontend (N-S) NIC to connect to the FE fabric.

The procedures in this section will:

● Deploy a VXLAN EVPN fabric on the frontend leaf and spine switches, connected in a 2-tier spine-leaf topology.

● Enable Virtual Port Channel (vPC) peering on compute/management leaf pairs and storage leaf pairs in the frontend fabric.

● Provision connectivity to UCS servers that will be used to host the control plane and workload management components for the AI workloads running on UCS GPU servers.

● Provisioning external connectivity from the frontend fabric to other enterprise internal and external networks. This includes connectivity to Cisco Intersight, and other SaaS services used in the AI POD solution.

● Provision any connectivity required to bring up the storage system.

● Enable connectivity between UCS management and storage, as well as from UCS GPU nodes to storage.

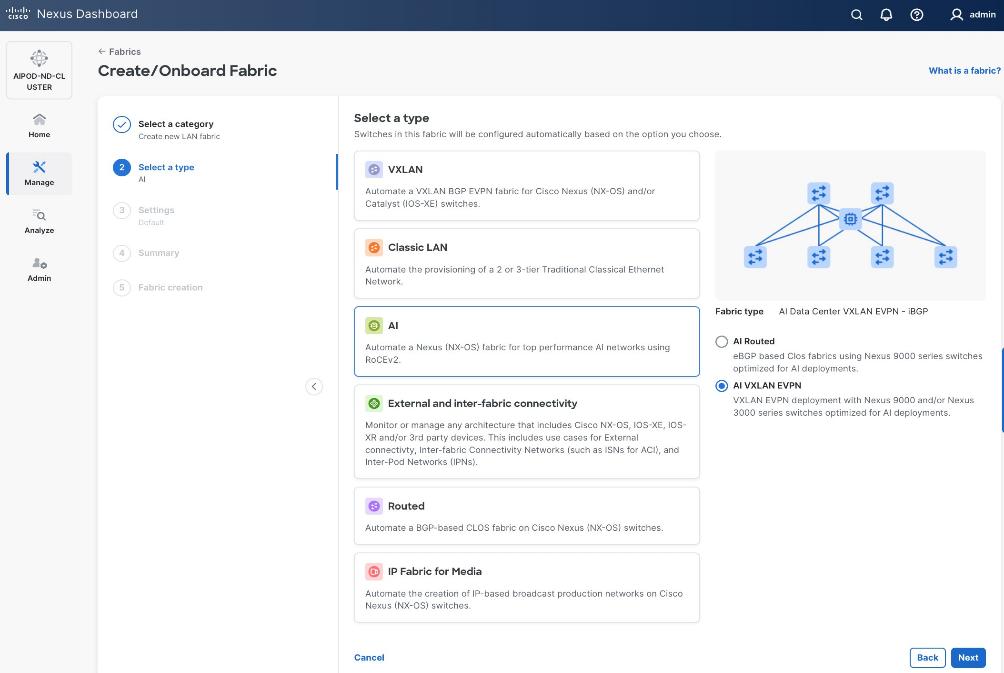

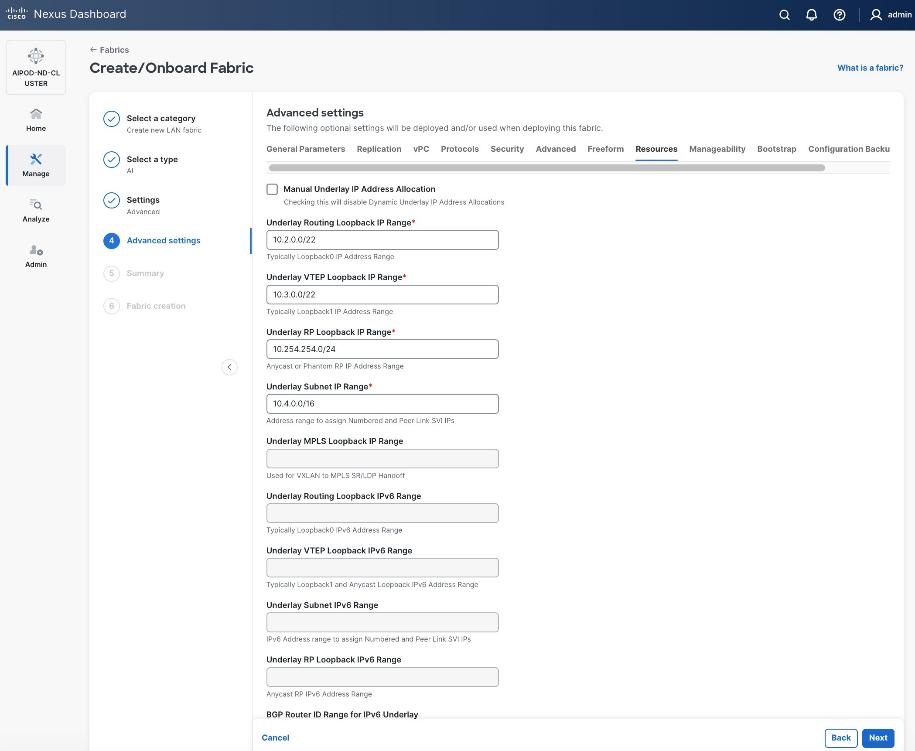

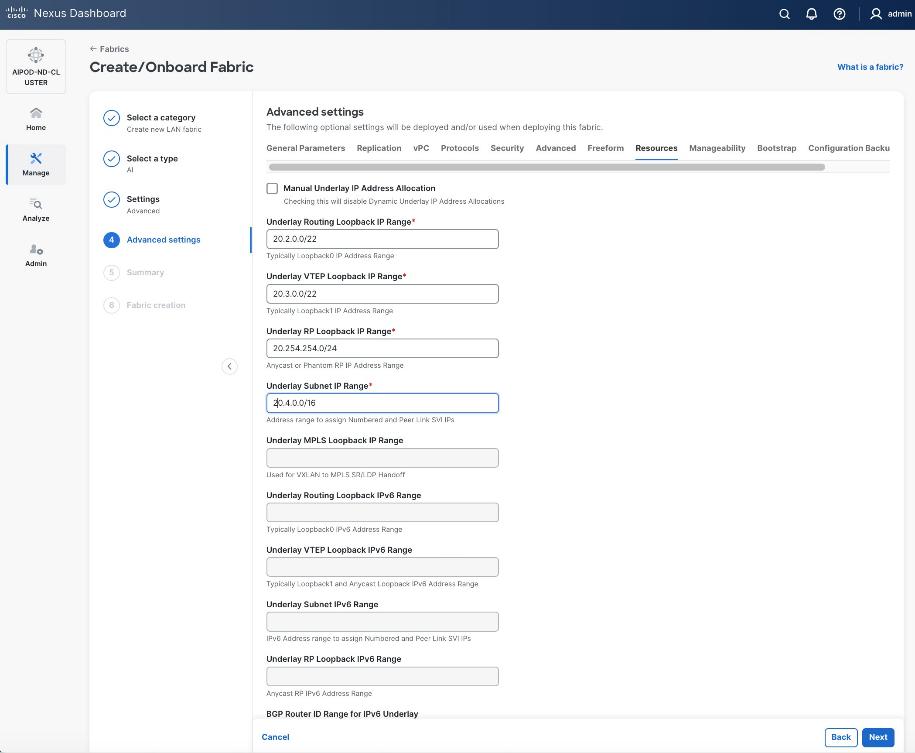

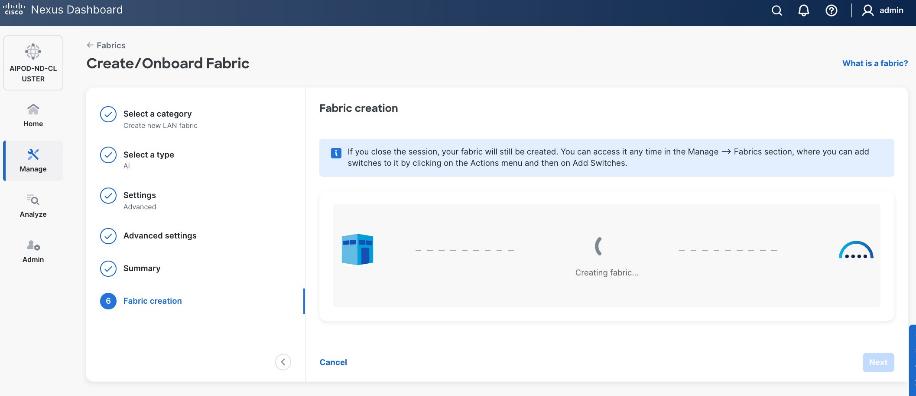

Procedure 1. Deploy VXLAN EVPN fabric on the two-tier spine and leaf switches

Step 1. From a web browser go to the management IP of any node in the Nexus Dashboard cluster. Log in using the admin account.

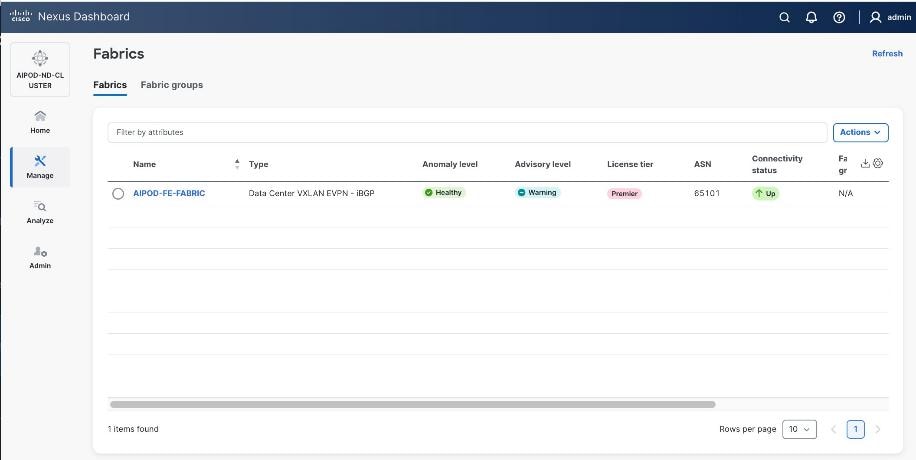

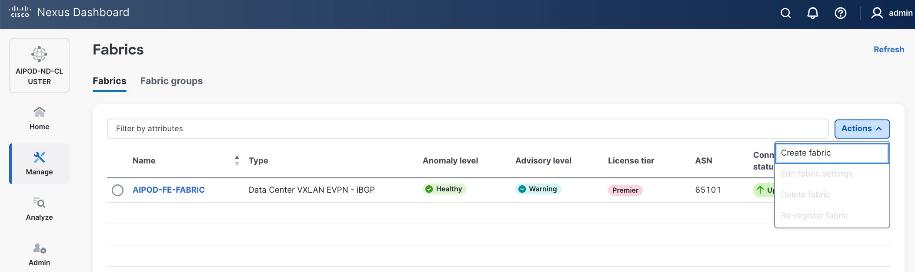

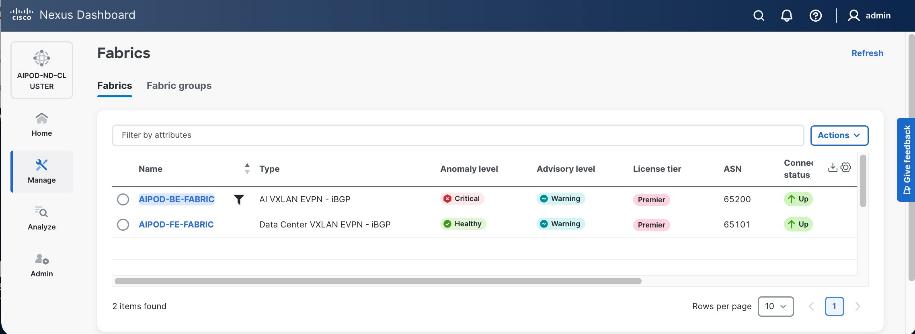

Step 2. From the left navigation menu, go to Manage > Fabrics.

Step 3. Click Actions and select Create Fabric from the drop-down list.

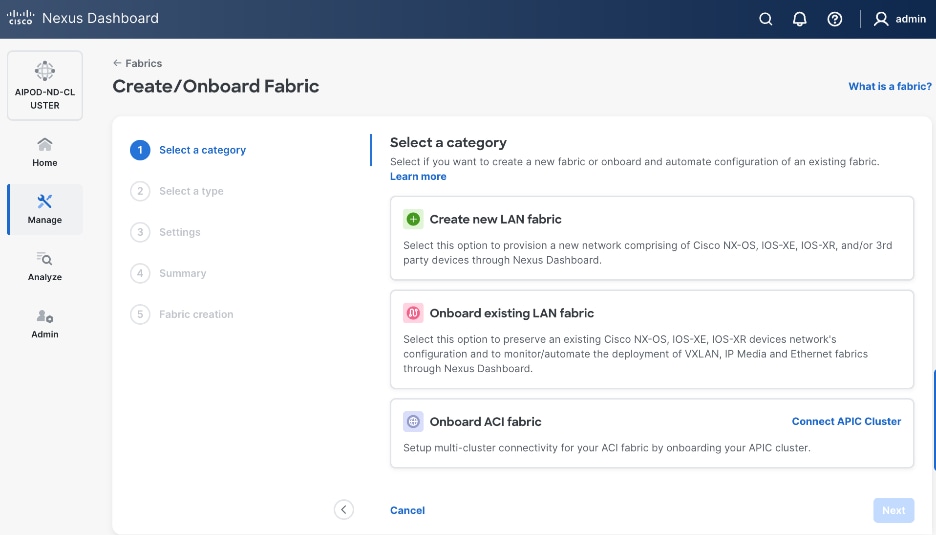

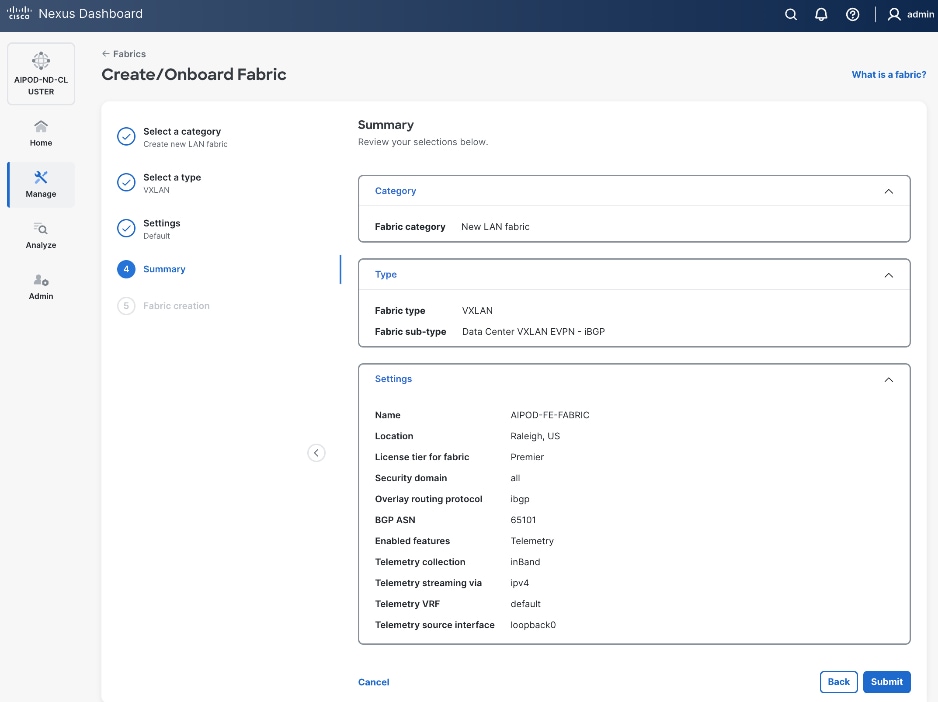

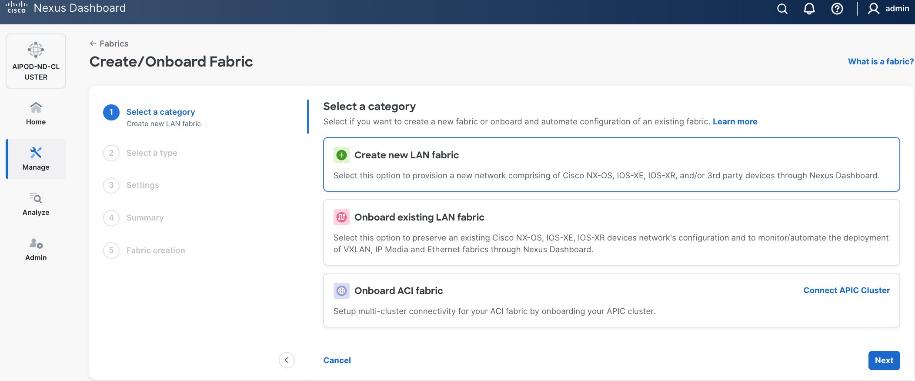

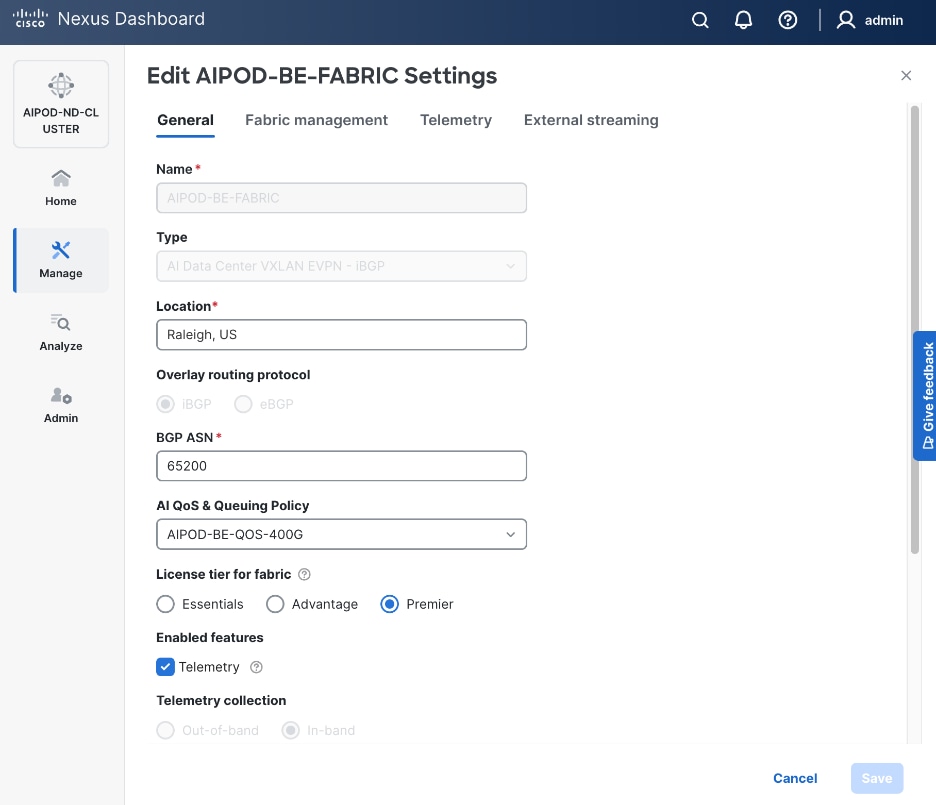

Step 4. Select Create new LAN fabric. Click Next.

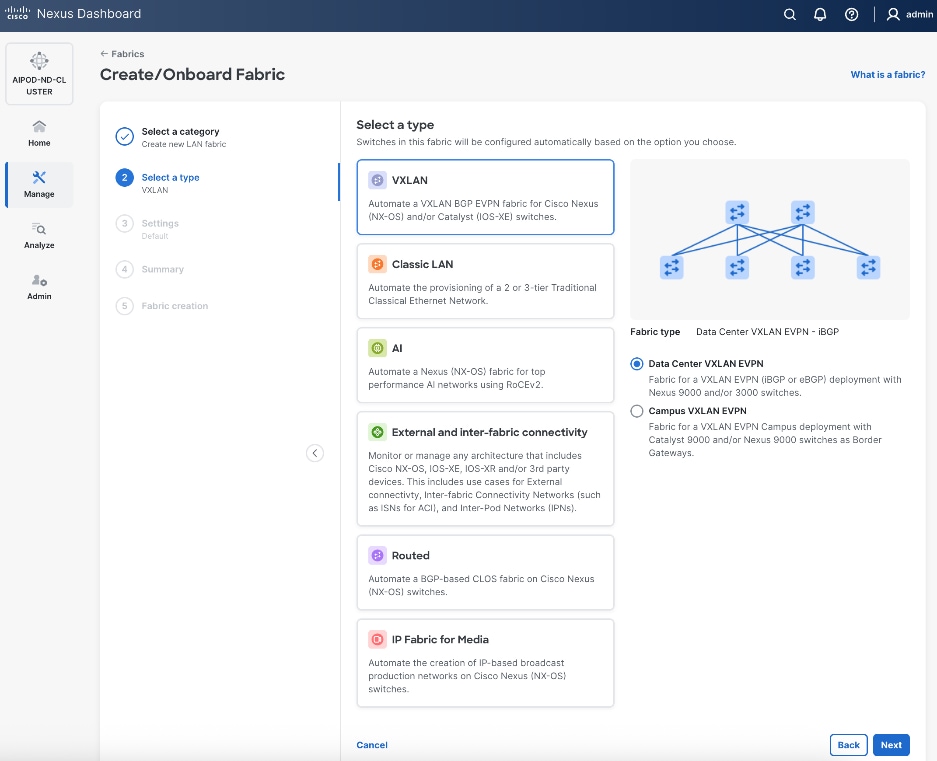

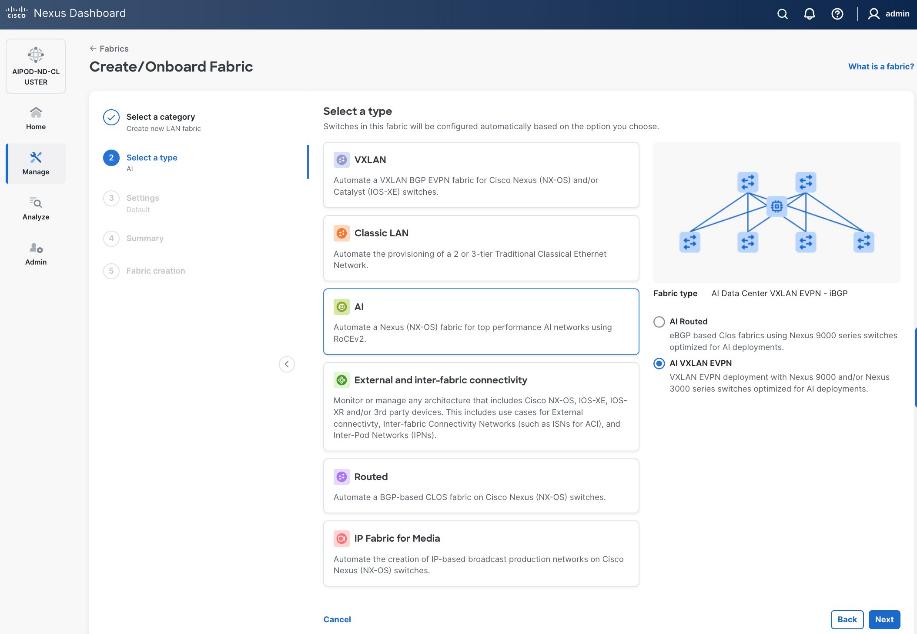

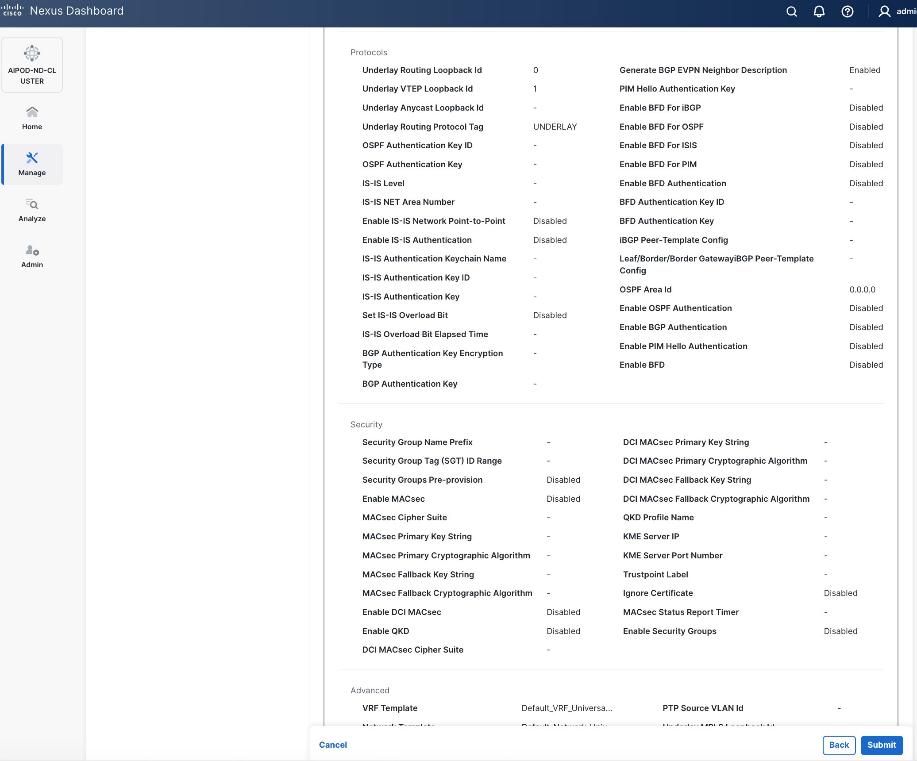

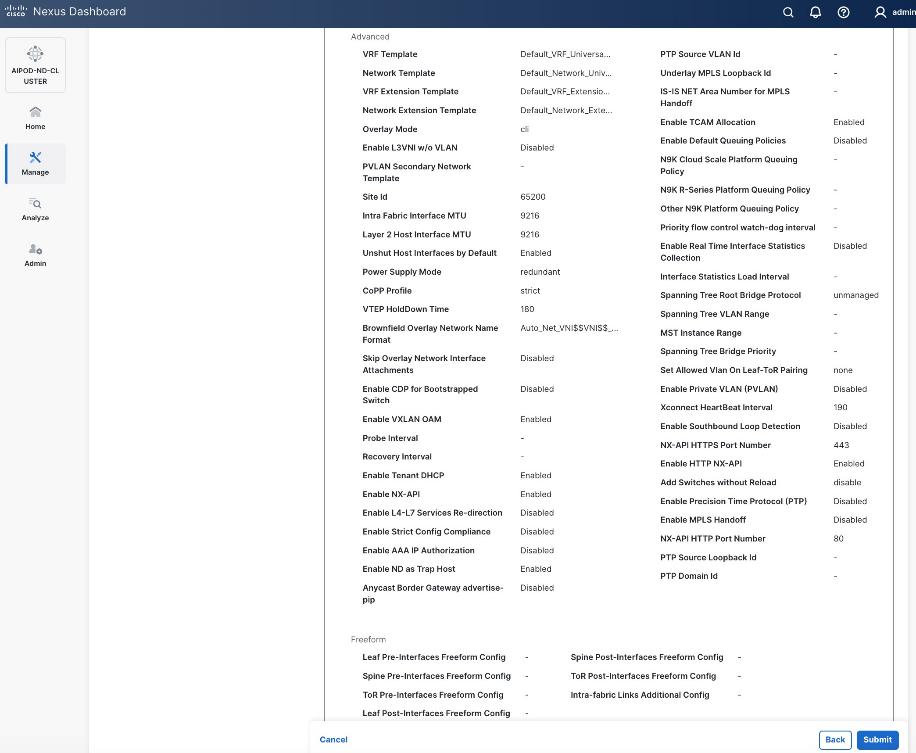

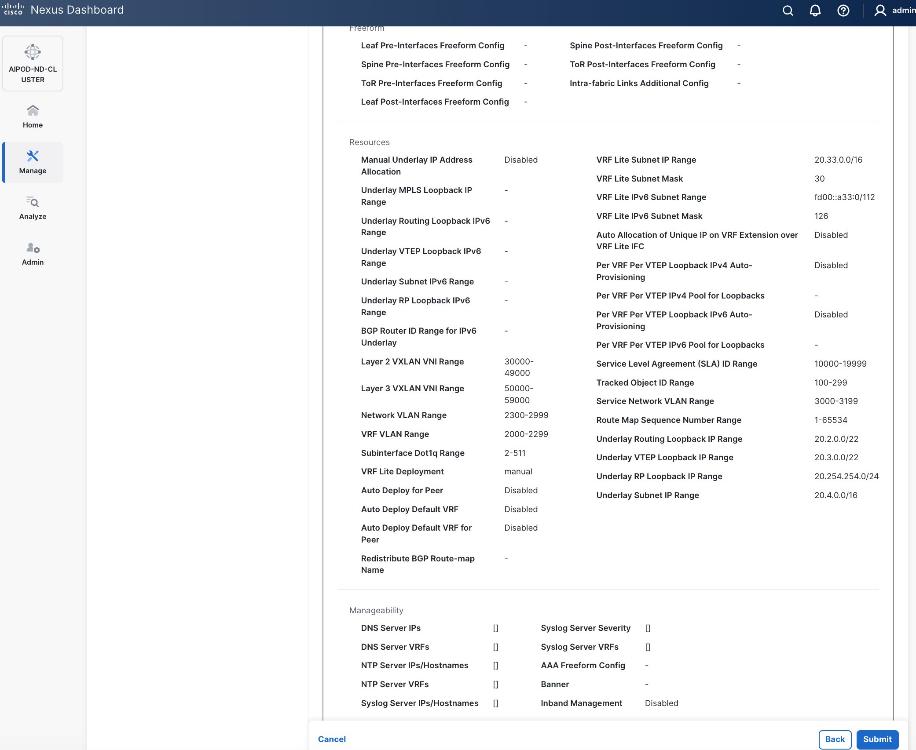

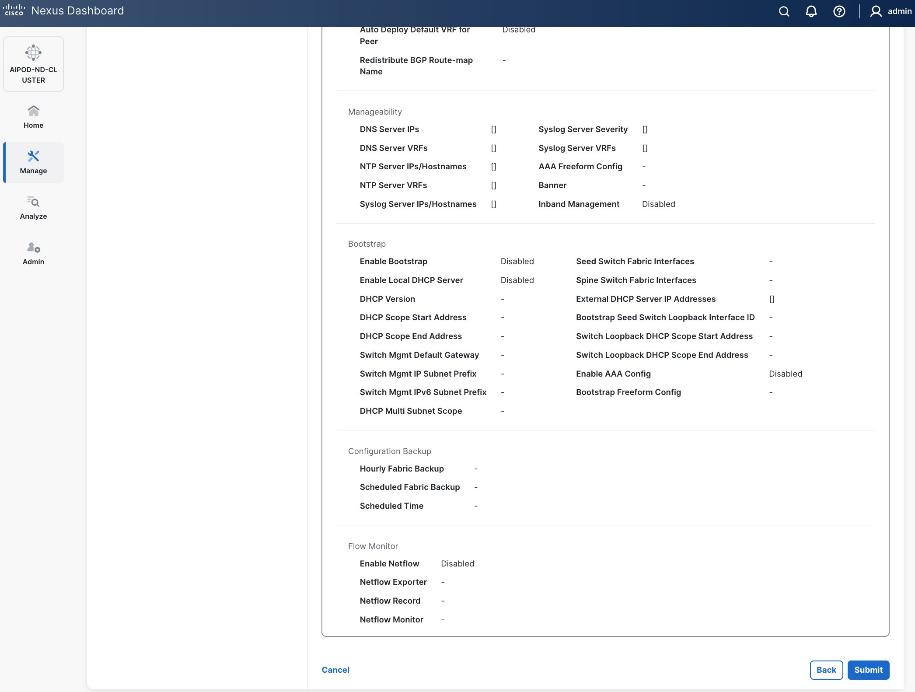

Step 5. Select VXLAN and radio button for Data Center VXLAN EVPN for the fabric type. Click Next.

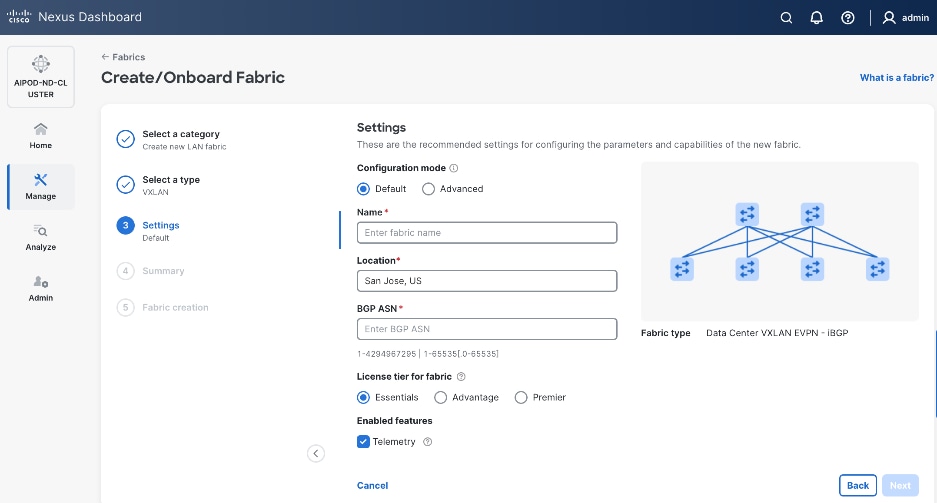

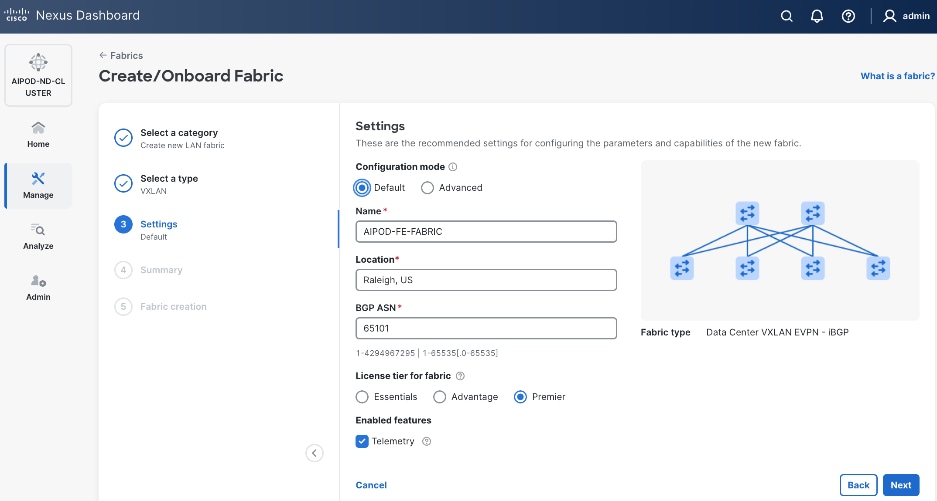

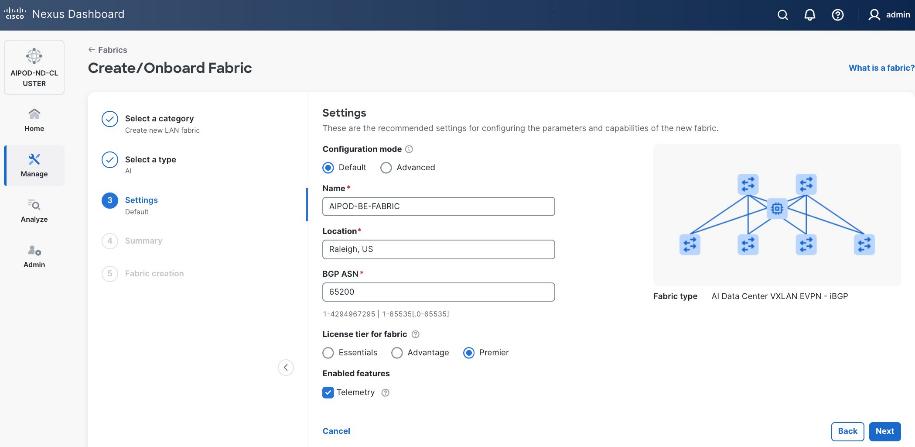

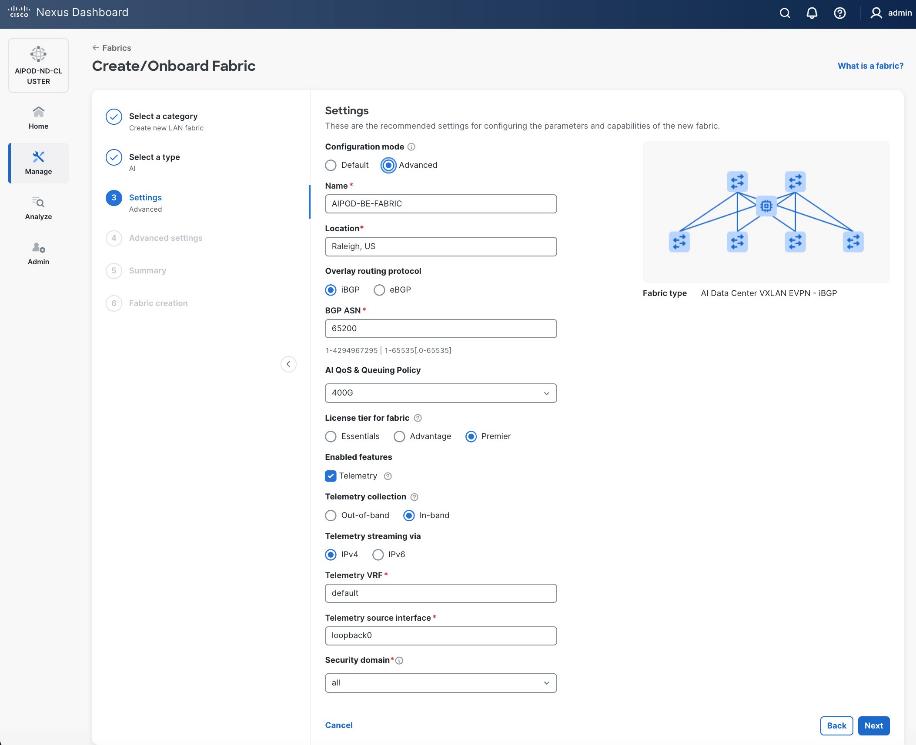

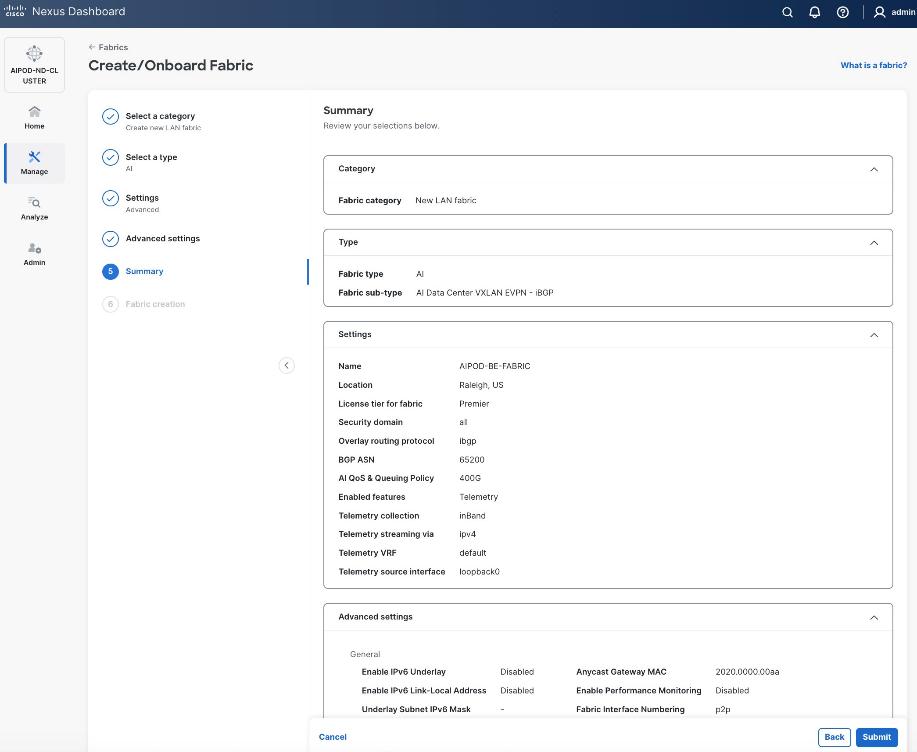

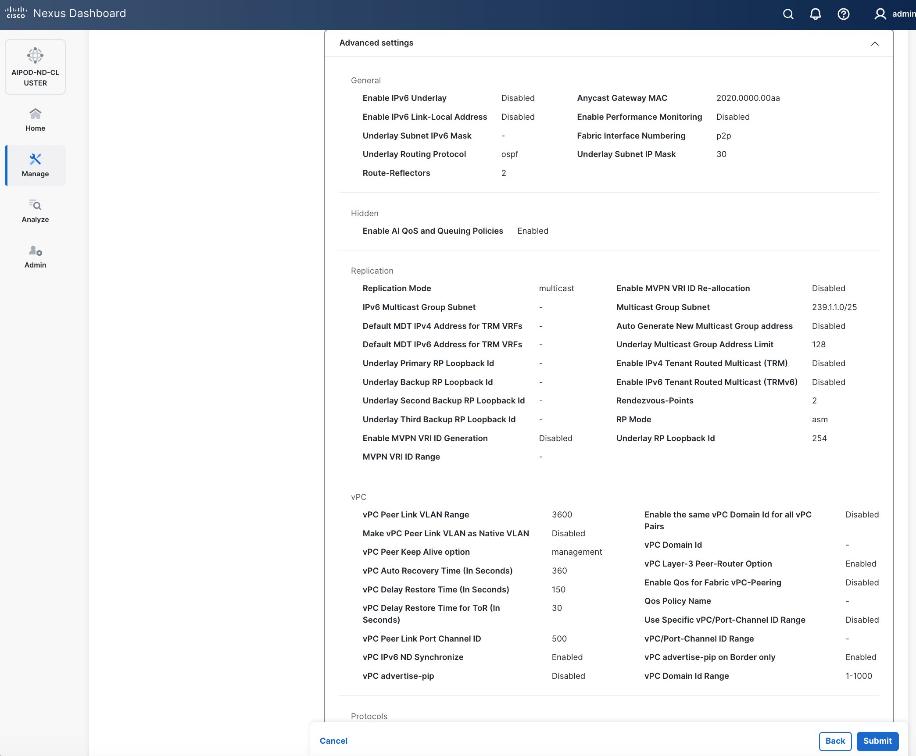

Step 6. For Configuration Mode, keep the Default option. Specify Name, Location, and BGP ASN for fabric. Also select the Licensing tier for fabric from the options available. Premier is required for advanced network analytics and day 2 operations. Click the ? icon to see the features available in each tier.

Step 7. Click Next.

Step 8. In the Summary view, verify the settings and click Submit.

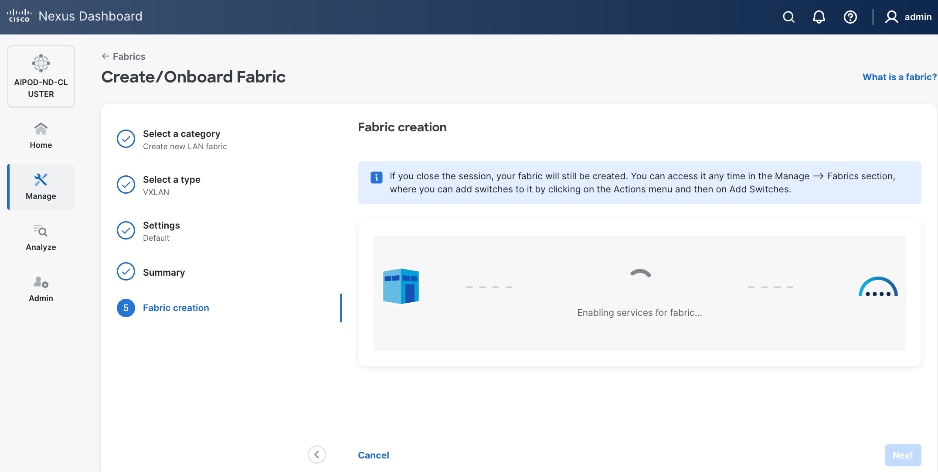

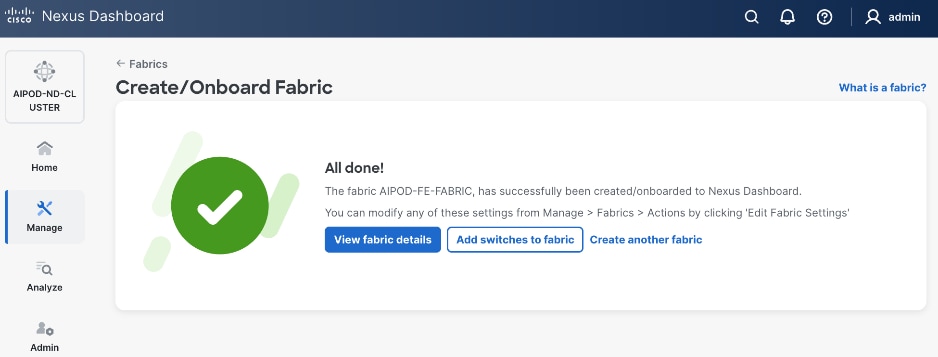

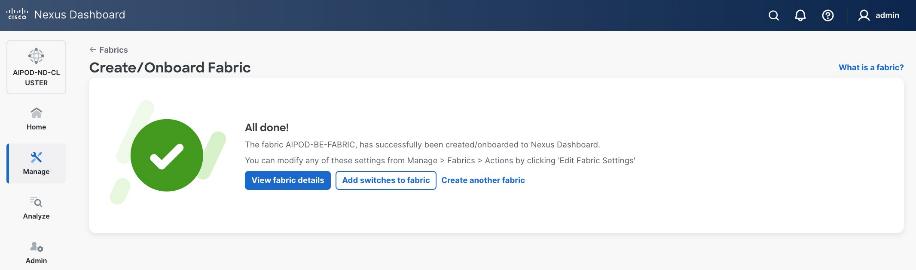

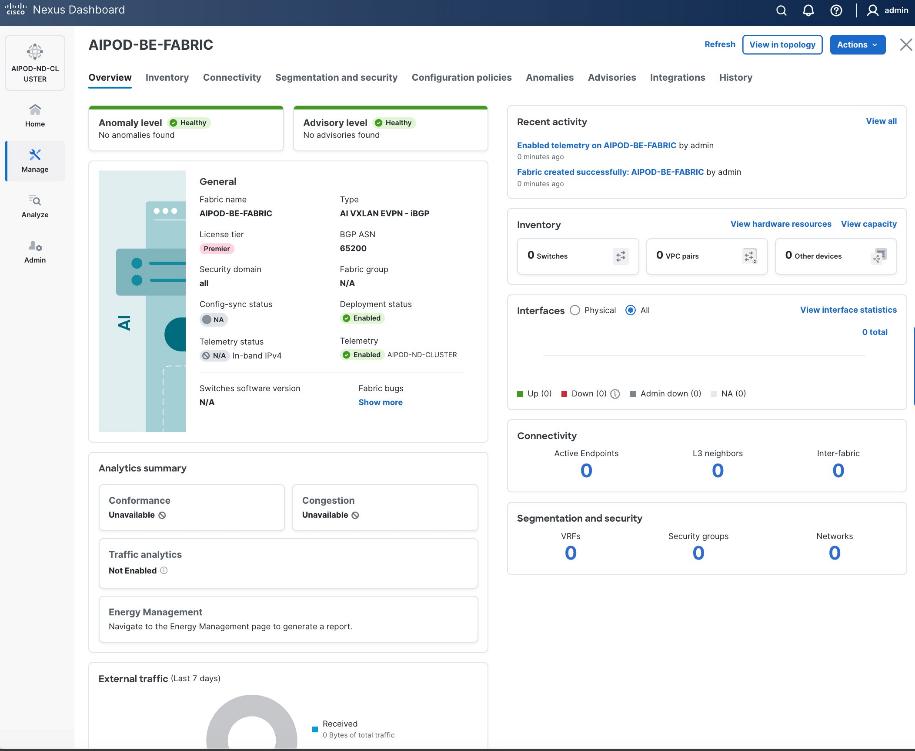

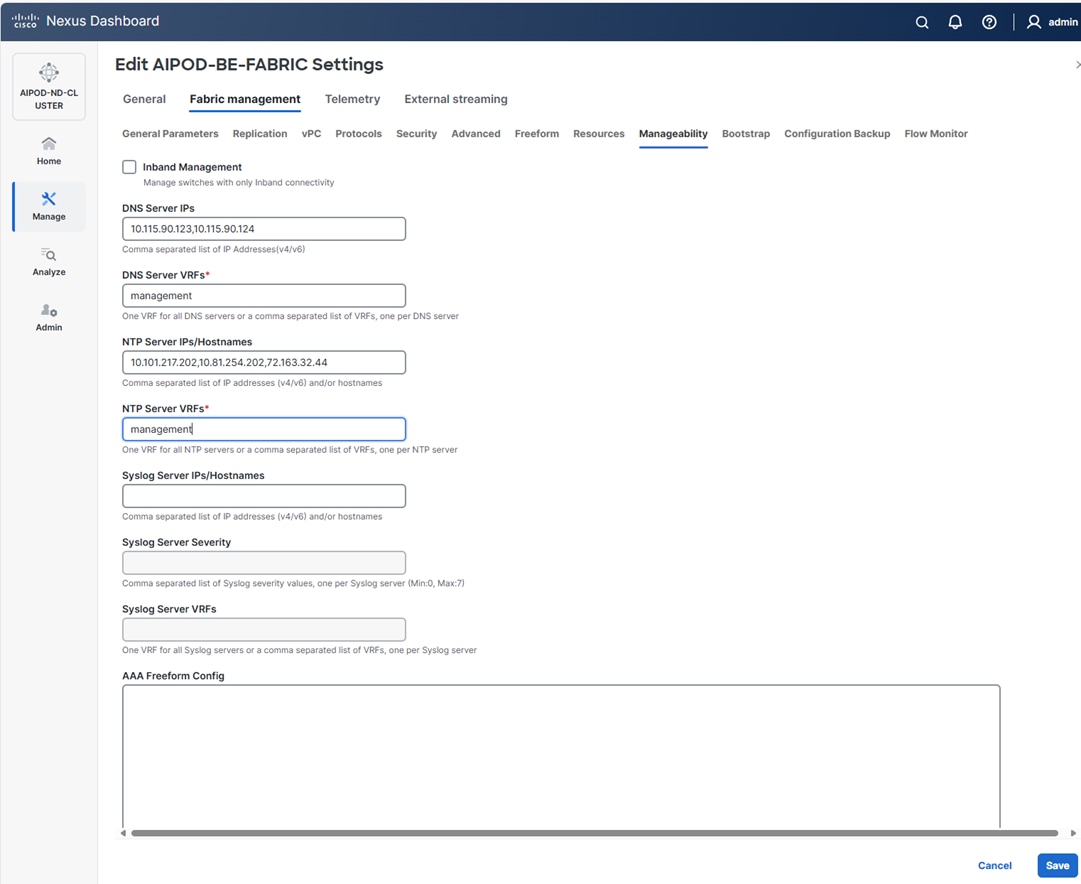

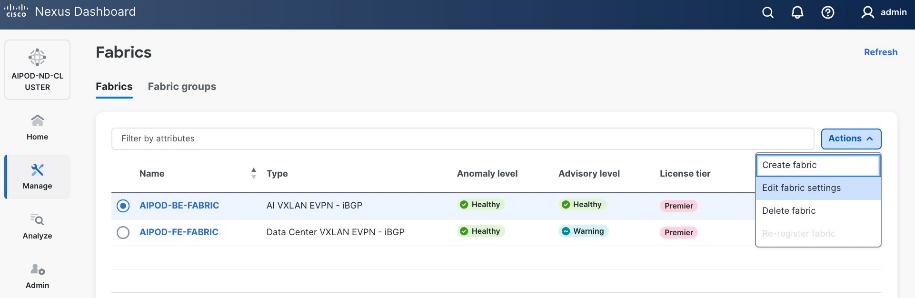

When Fabric Creation completes, you should see the following:

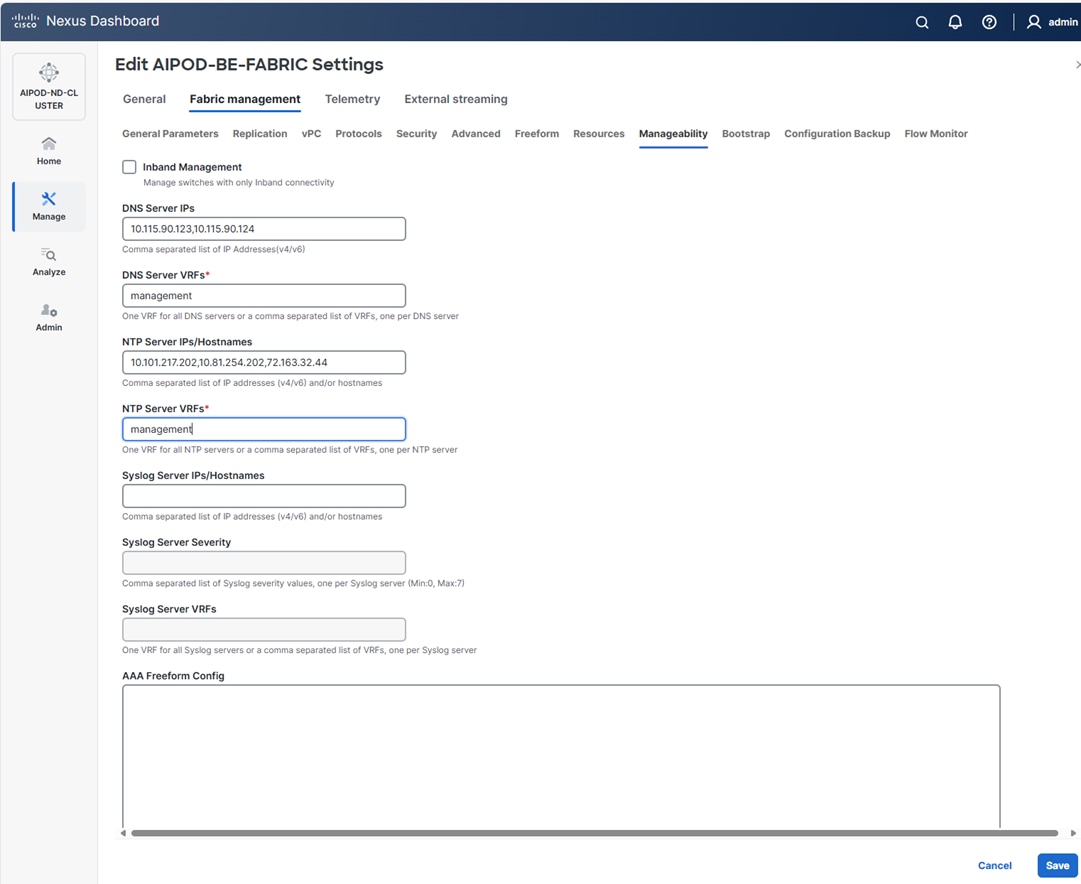

Step 9. Select Manage > Fabrics and then select the FE fabric. From the Actions drop-down list, select Edit fabric settings. Select the Fabric management tab and the Manageability tab. Add the NTP Server IPs and the NTP Server VRF (management) and click Save.

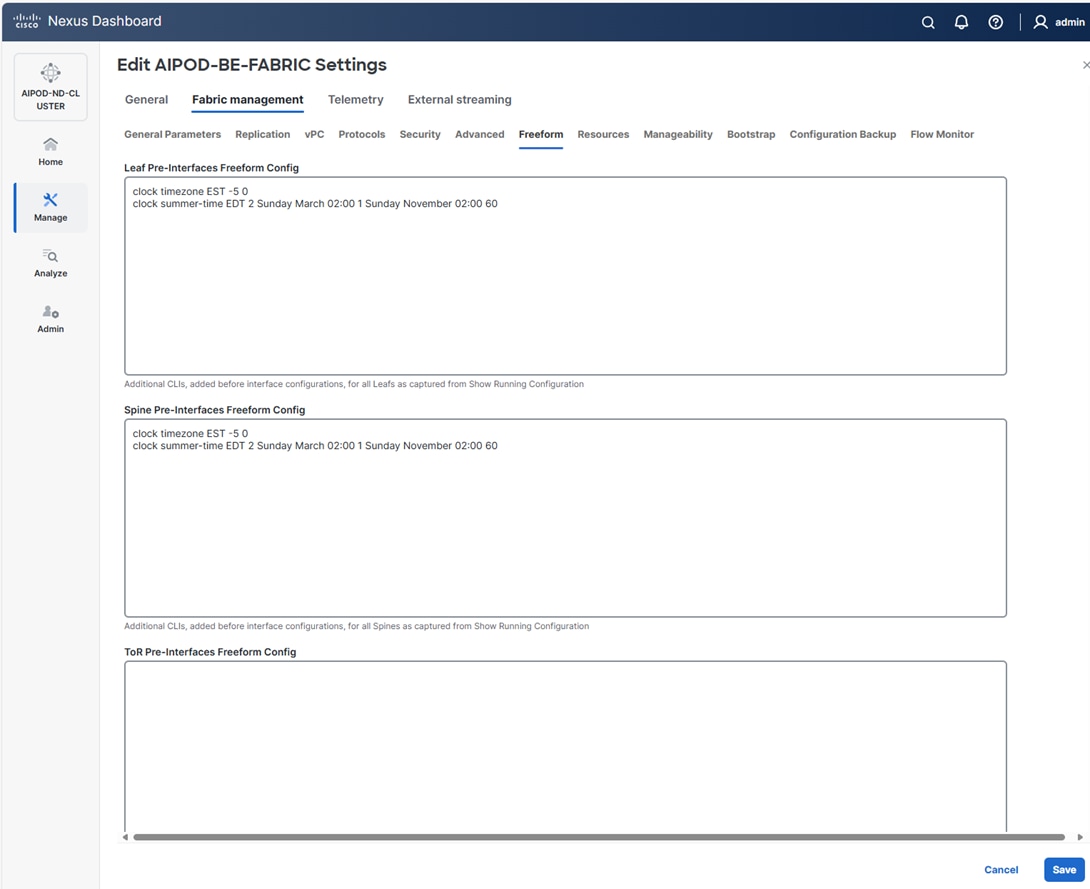

Note: The above screenshot and the following screenshot show the BE fabric but are the same for the FE fabric.

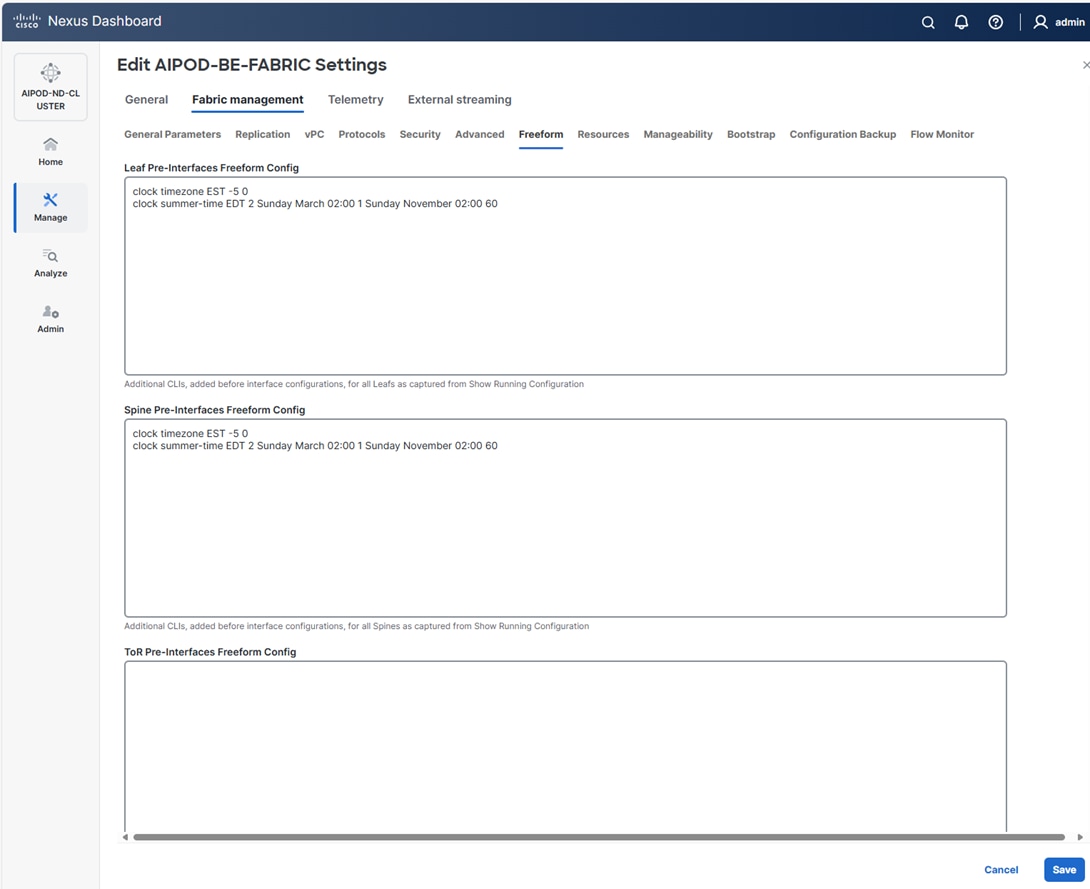

Step 10. Select the Freeform tab and optionally enter the info shown in the screenshot modified for your timezone. Click Save.

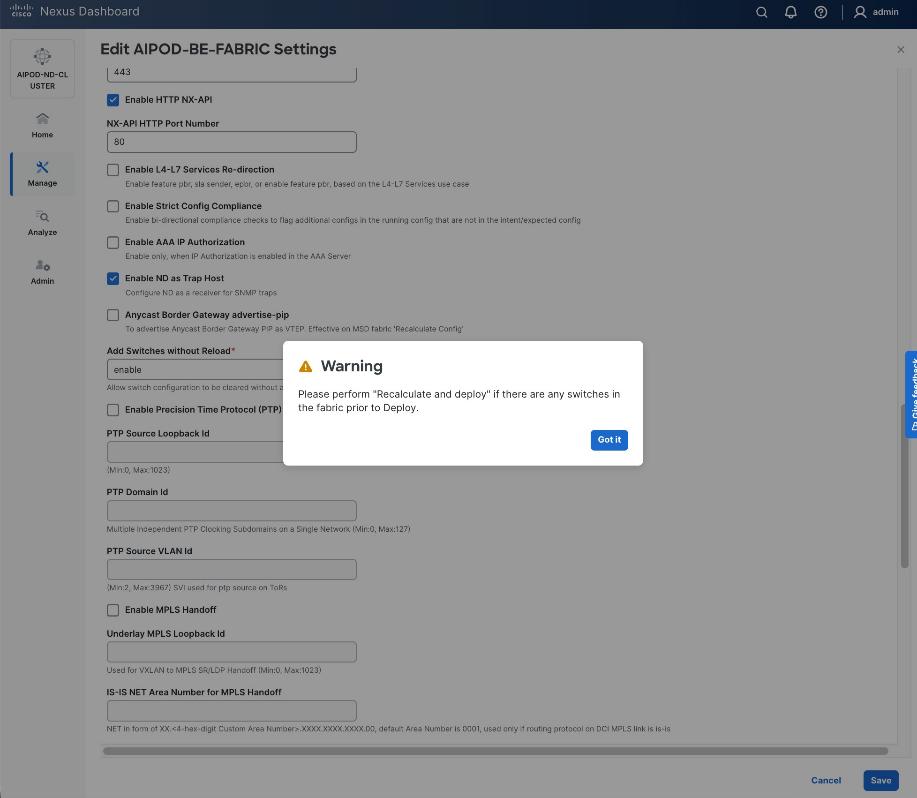

Step 11. If you want to add switches without a reload, click View fabric details. Select Fabric Management > Advanced tabs and scroll down to find the field for Add switches without Reload and change setting to enable. Click Save, followed by Got it in the pop-up window.

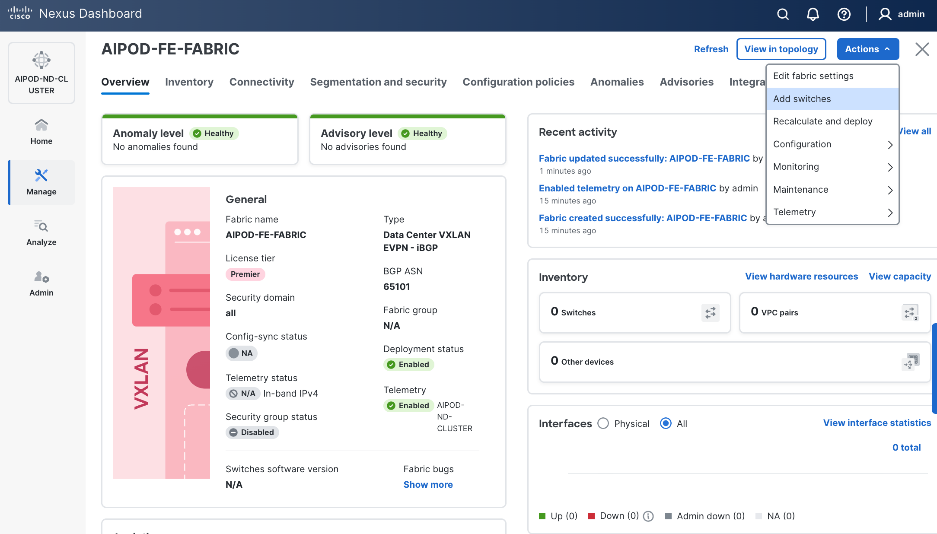

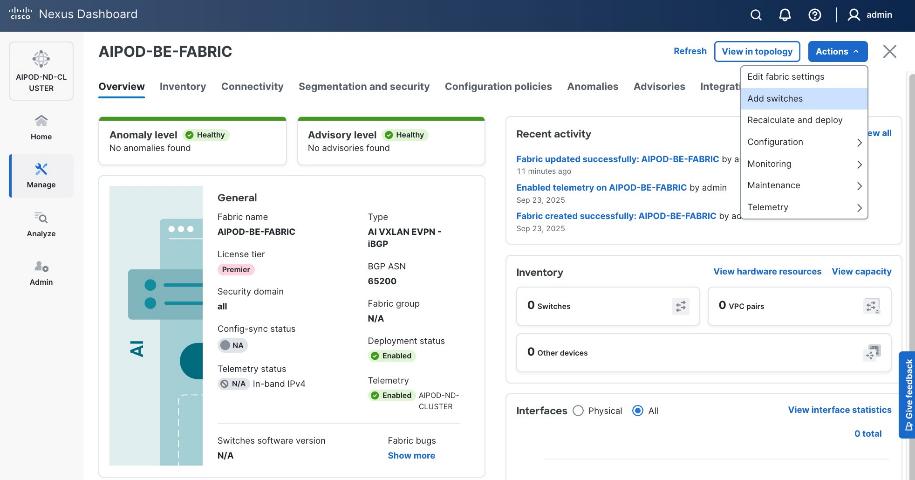

Step 12. From the Manage > Fabrics view, click the fabric name to add switches to the fabric.

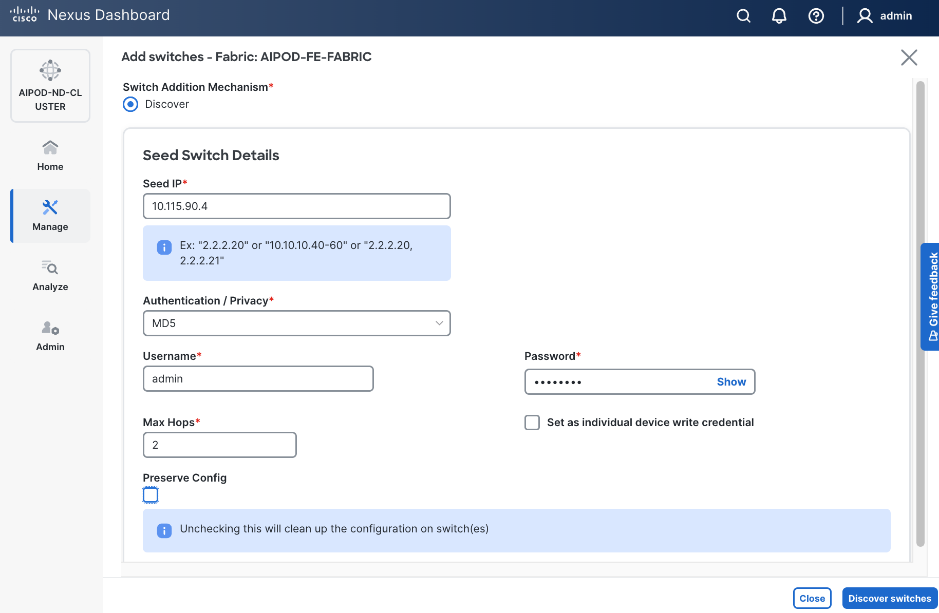

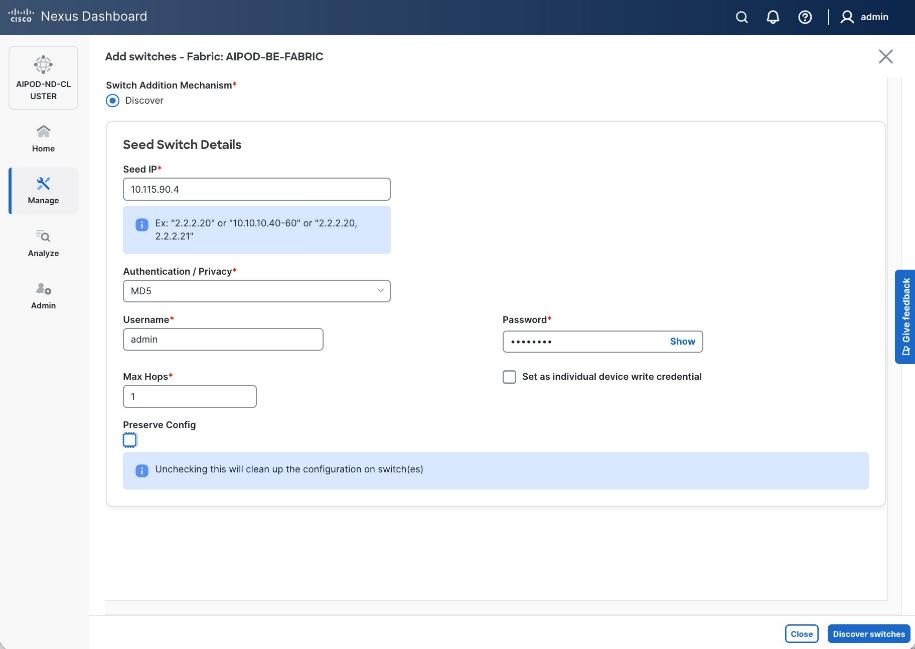

Step 13. Click Actions and select Add Switches from the drop-down list.

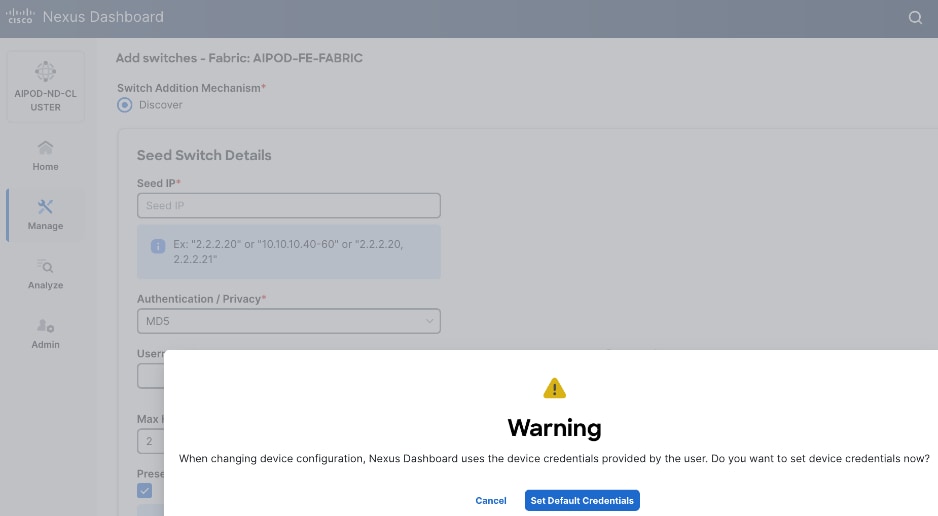

Step 14. In the pop-up window, click Set Default Credentials.

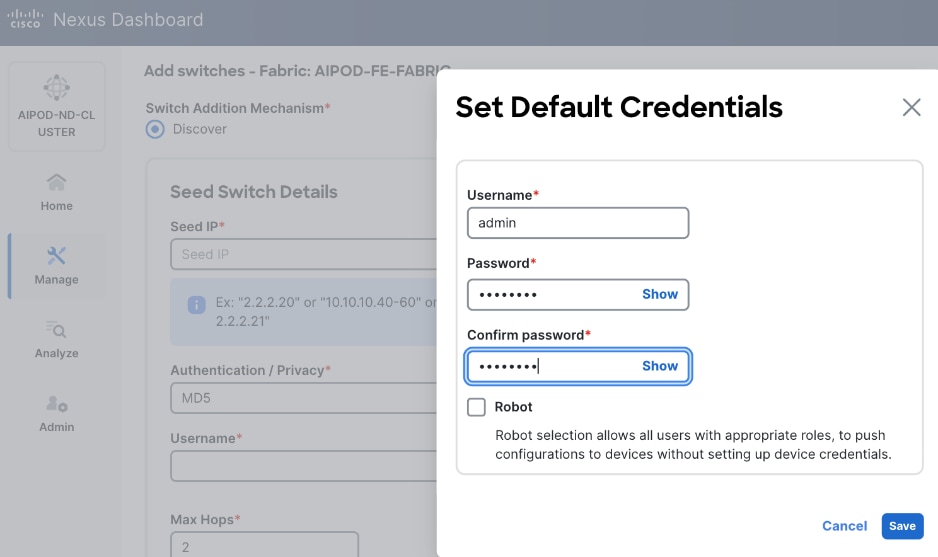

Step 15. Specify Username and Password. Click Save.

Step 16. Click Ok.

Step 17. Specify Seed IP, Username and Password. Adjust Max hops as needed. Click Discover Switches.

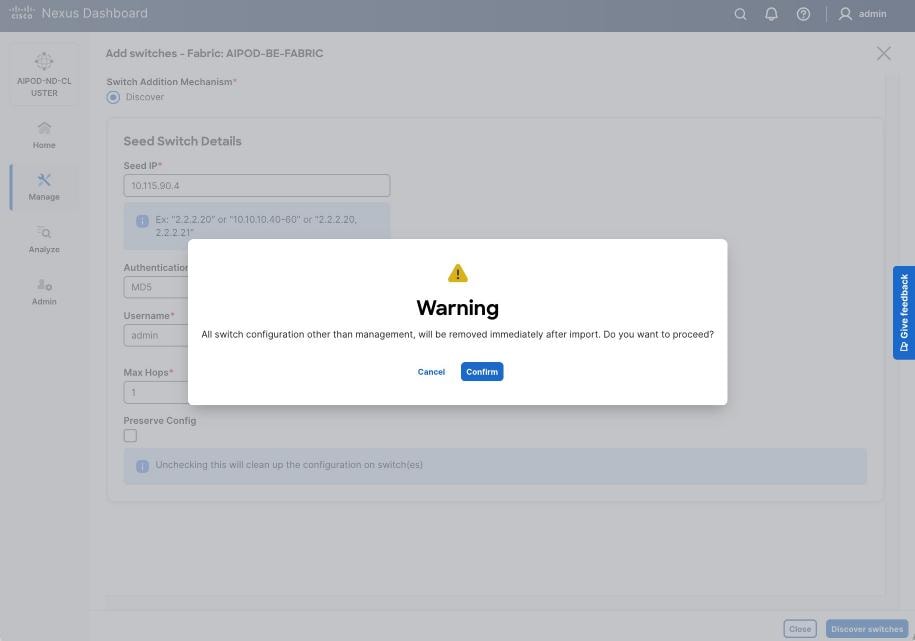

Step 18. Click Confirm in the pop-up Warning.

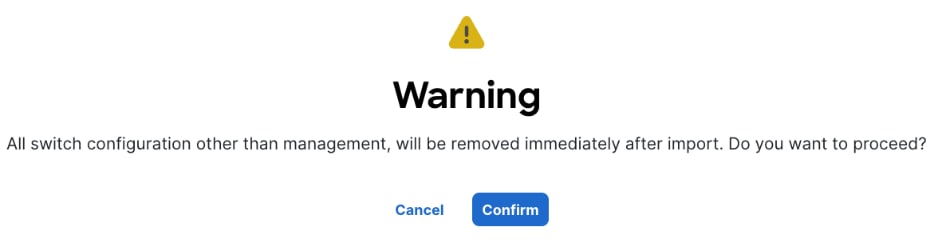

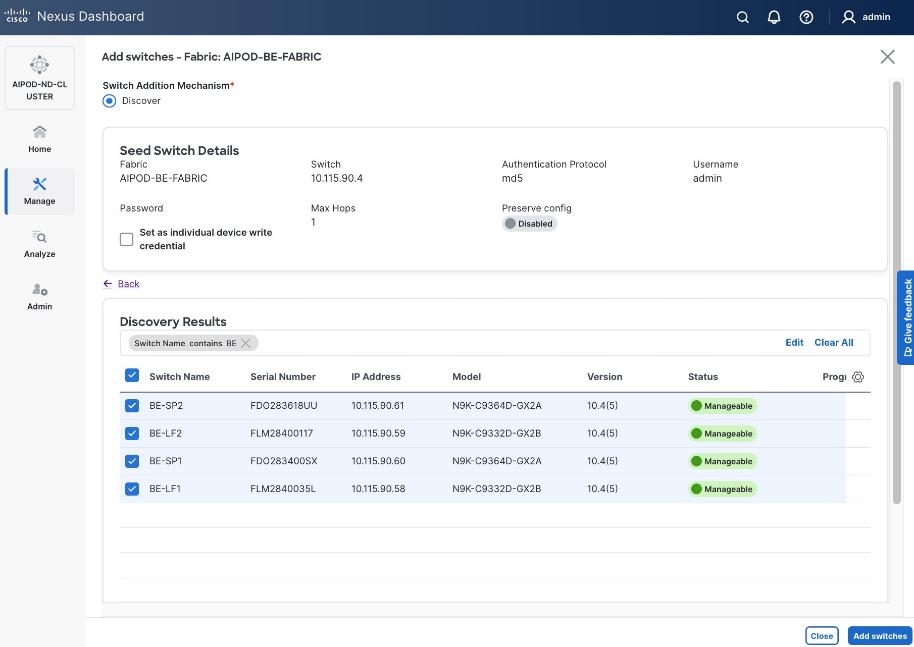

Step 19. Filter the discovered switch list as needed to view just the switches you want to add.

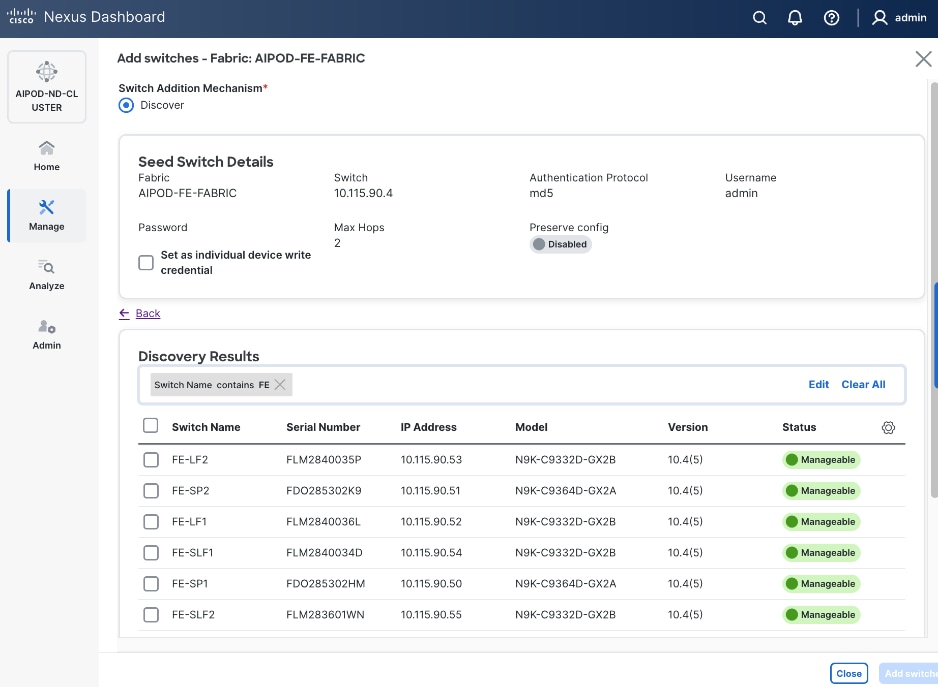

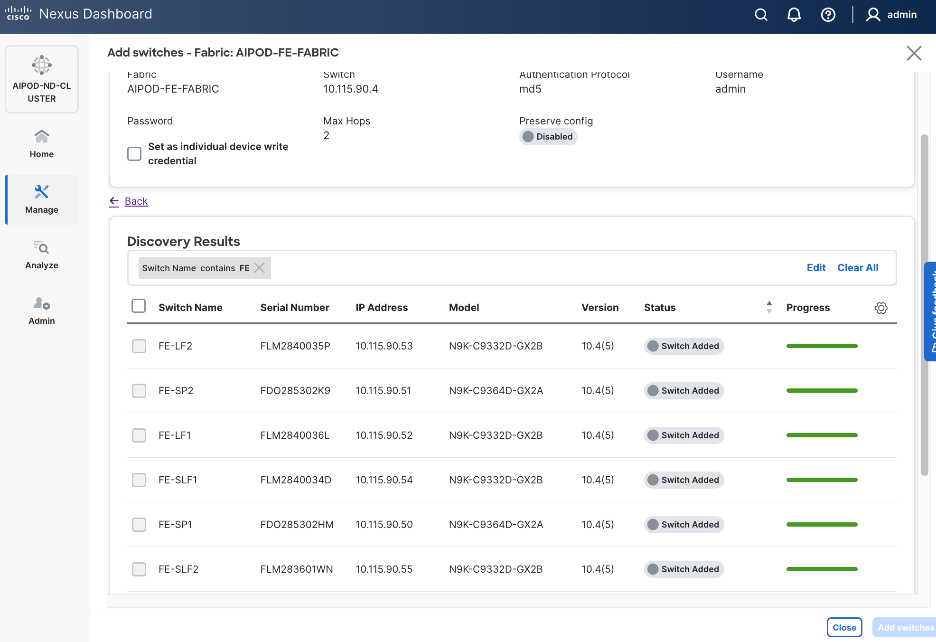

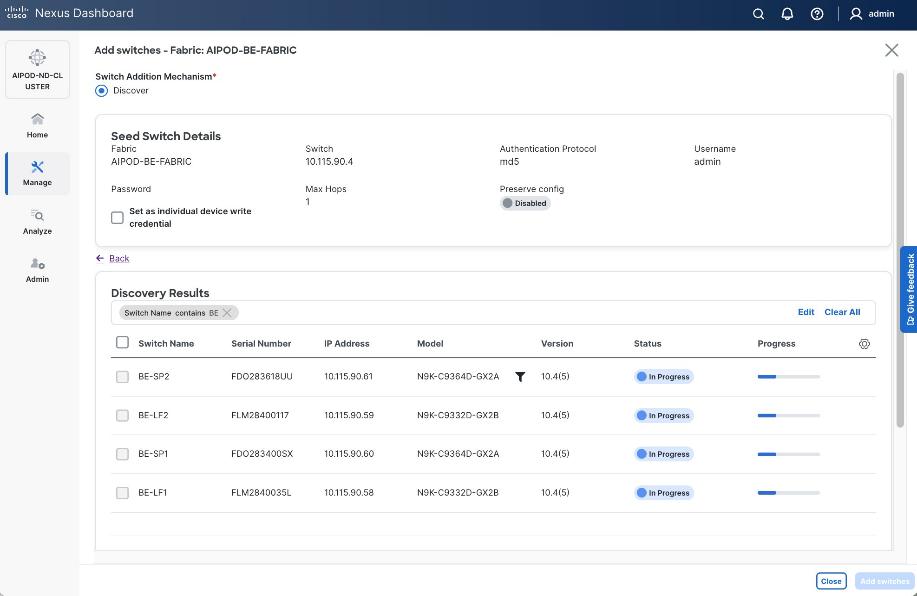

Step 20. Select all switches to be added. Click Add switches.

Step 21. Click Close when all switches have been added.

Step 22. From the Manage > Fabrics, select the fabric and click the Inventory tab.

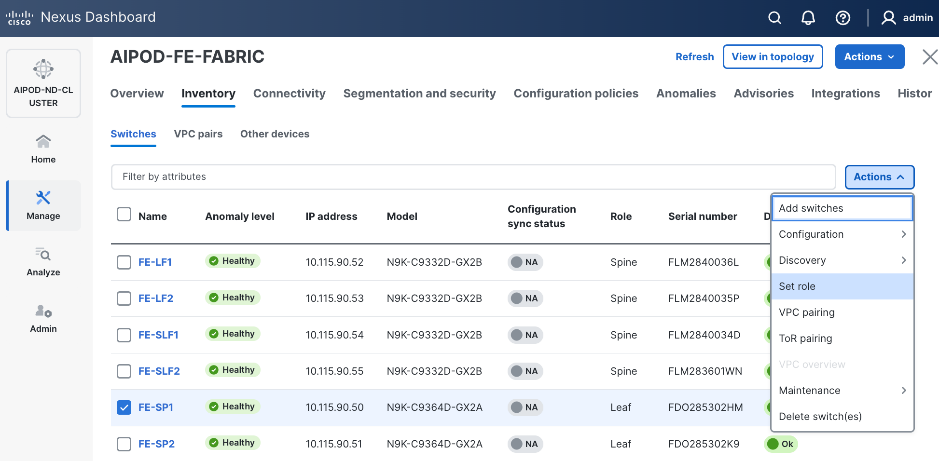

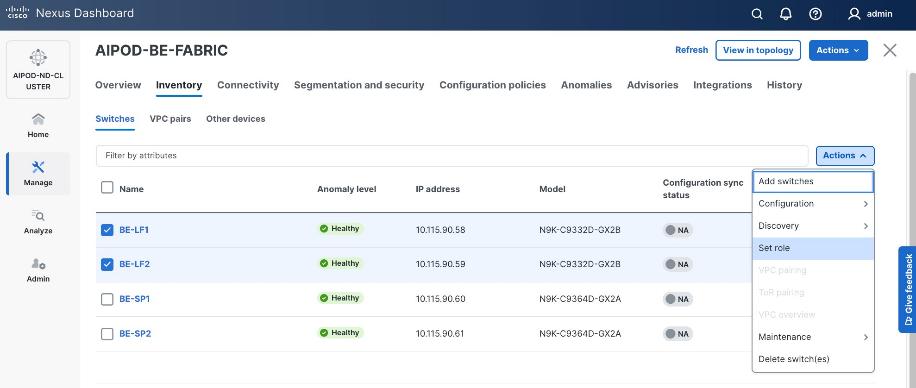

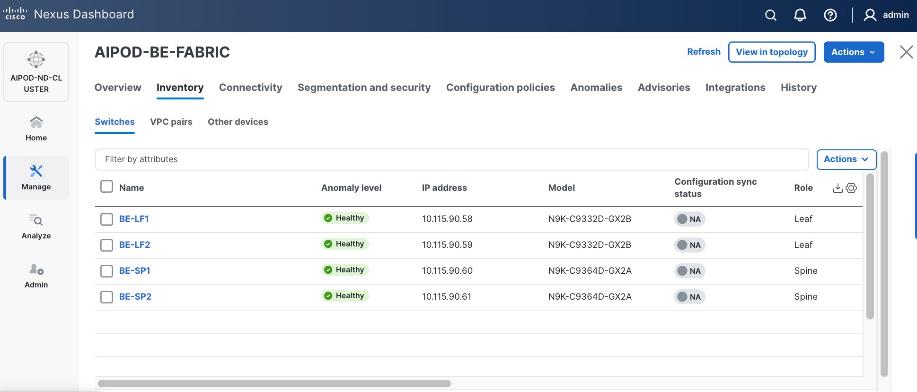

Step 23. For each switch in the list, verify Role is correct. To change the role, select the switch and then click the lower Actions button and select Set role from the drop-down list.

Step 24. In the Select Role pop-up window, select the correct role from the list and click Select.

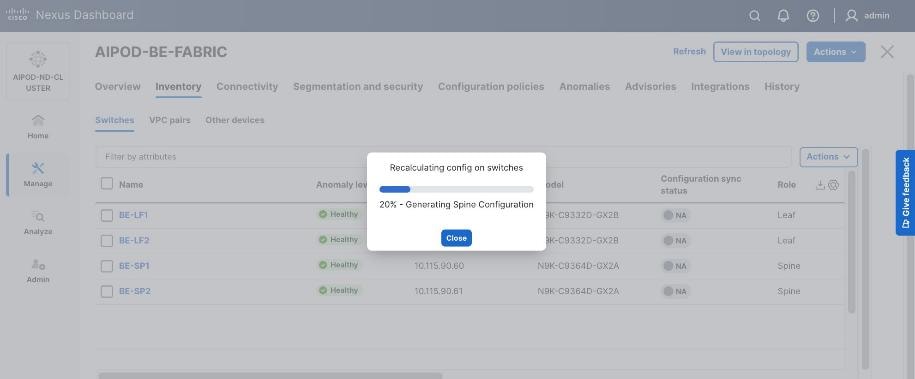

Step 25. Click OK in the pop-up warning to perform Recalculate and deploy to complete the change.

Step 26. Repeat steps 1 – 25 to select and confirm the role for all switches in the fabric.

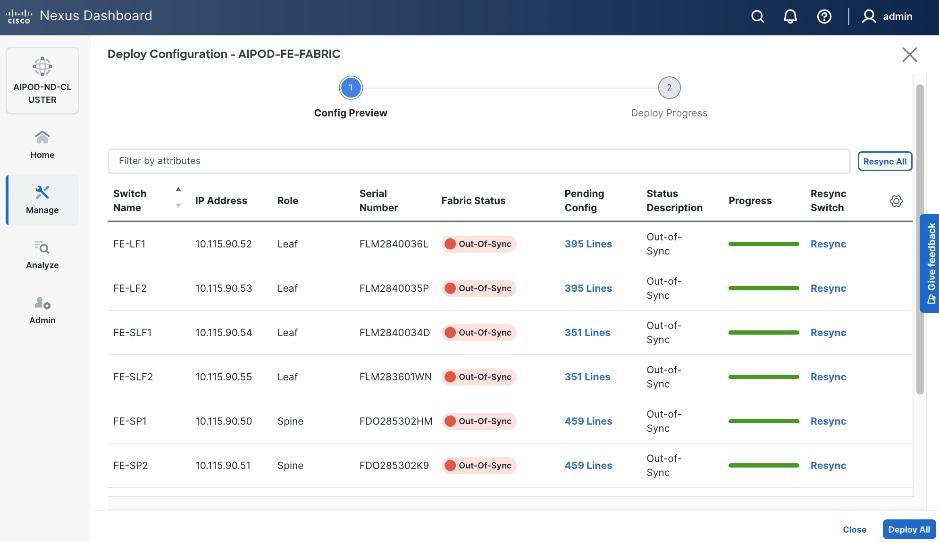

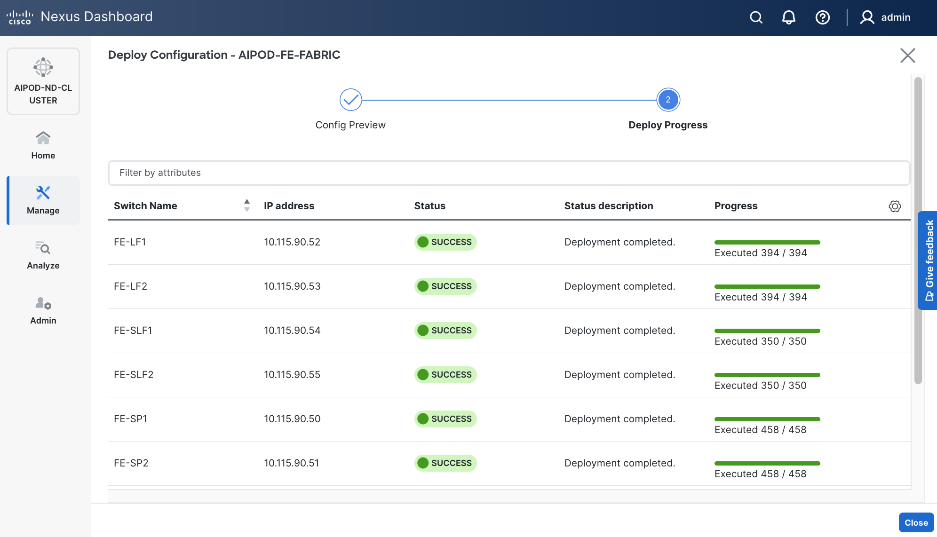

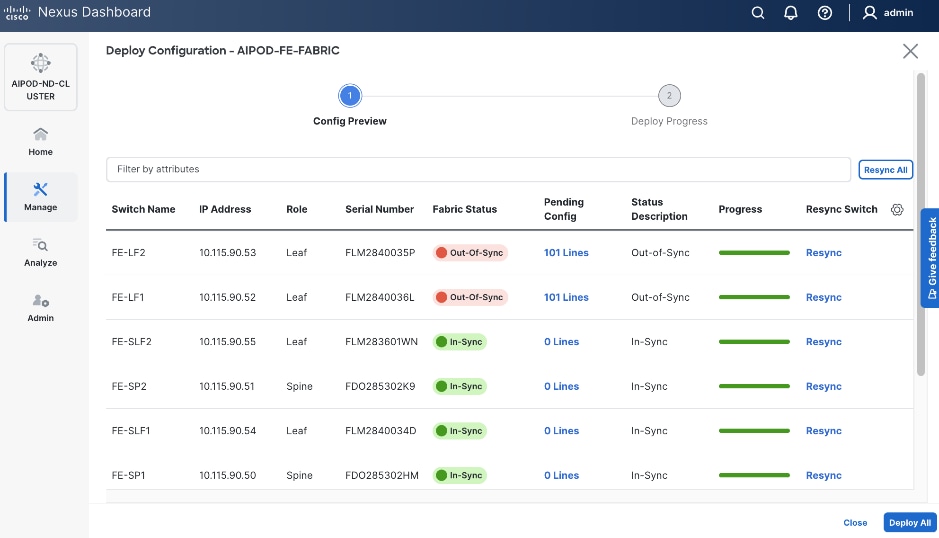

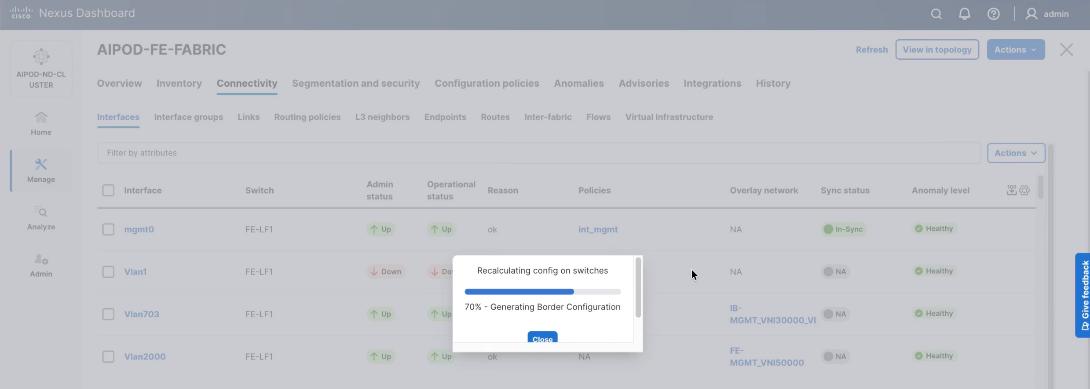

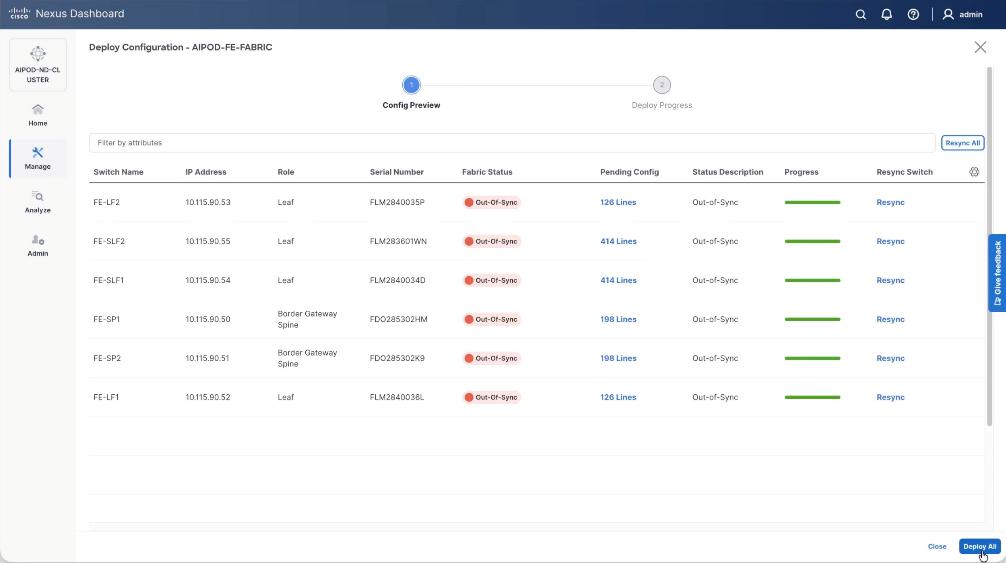

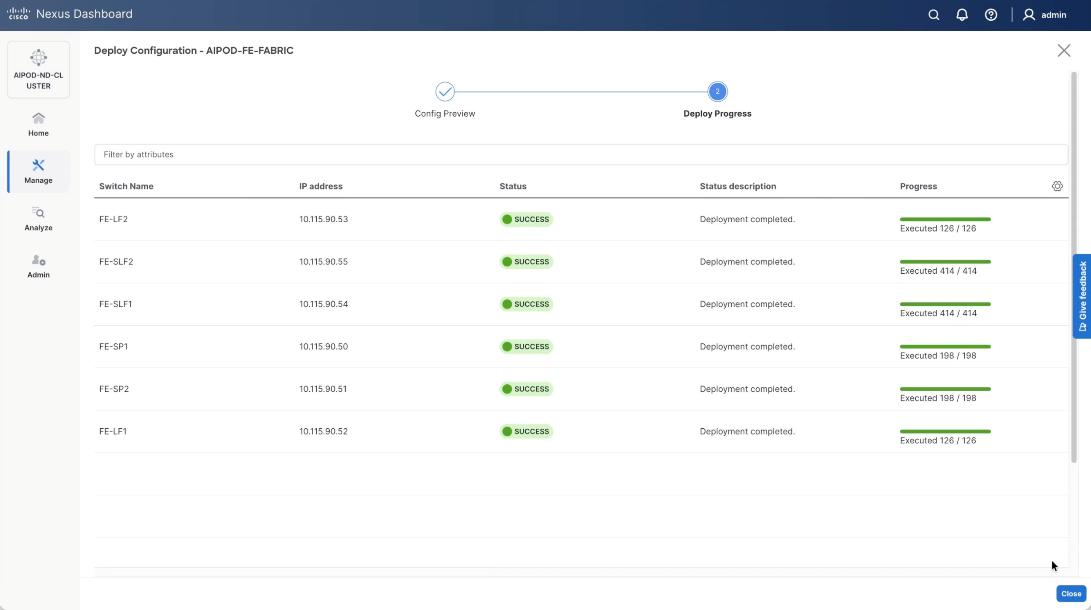

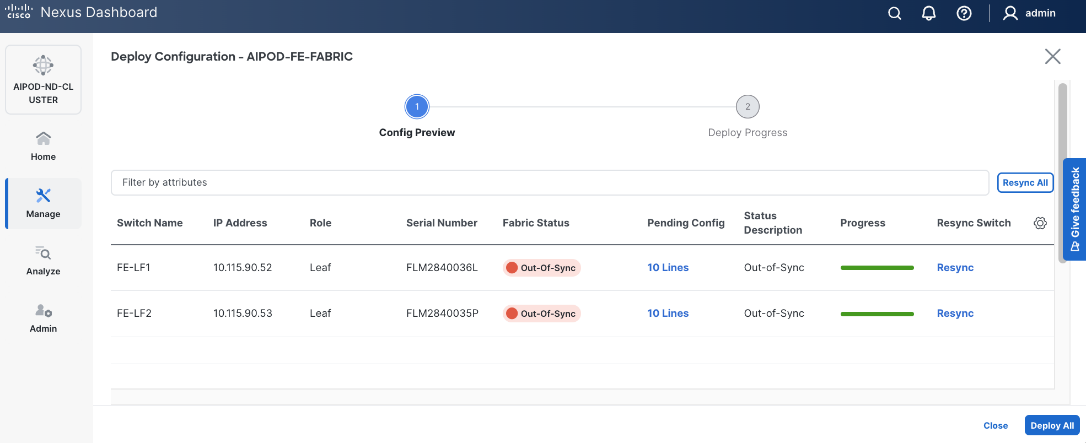

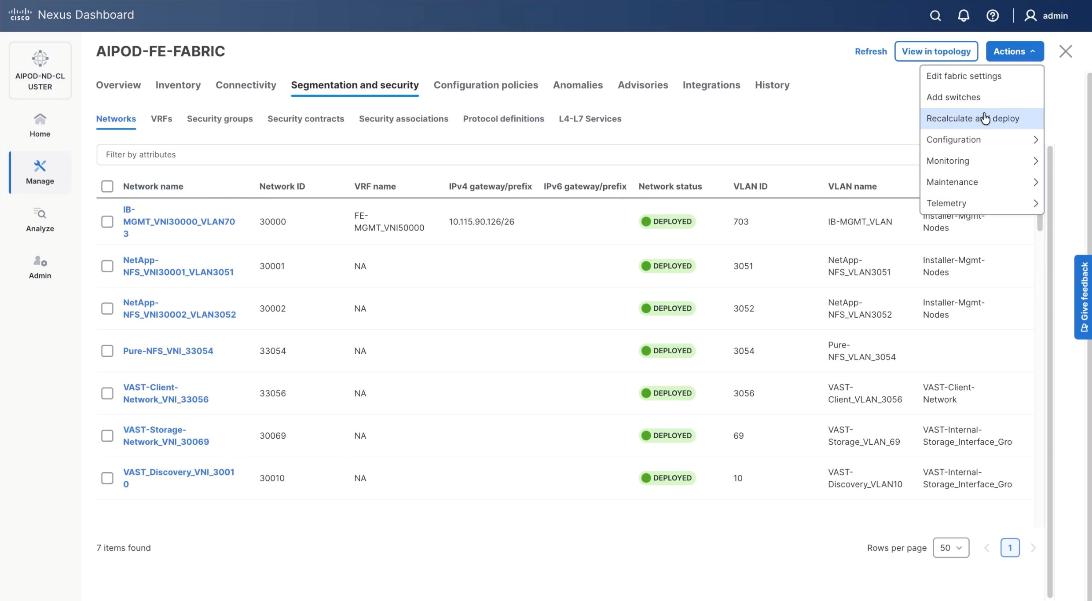

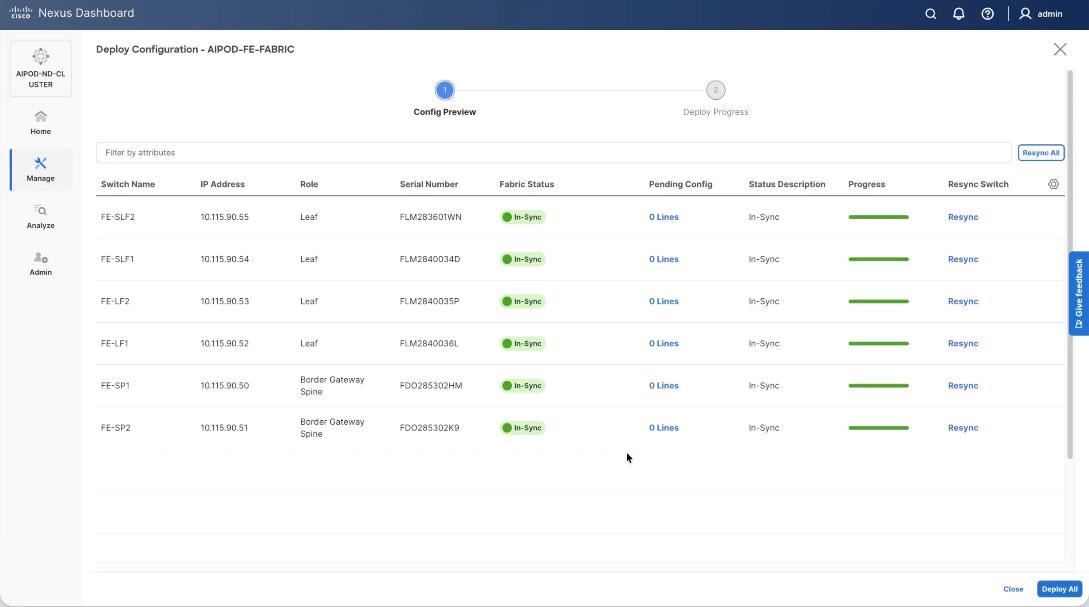

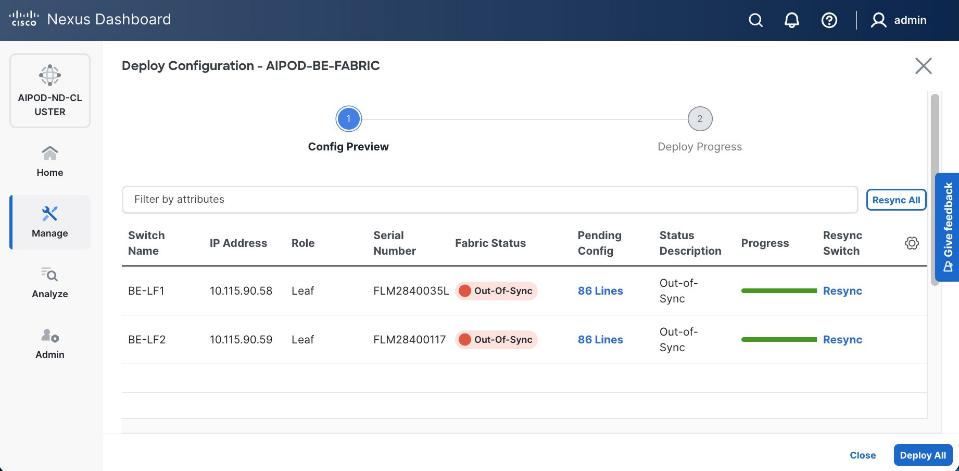

Step 27. Click the upper Actions button and select Recalculate and deploy from the drop-down list. If it says one is already in progress, wait a few minutes and repeat the steps. You should see the Fabric as Out-of-sync with some Pending Config (lines of config) change.

Step 28. Click Deploy All.

Step 29. Click Close.

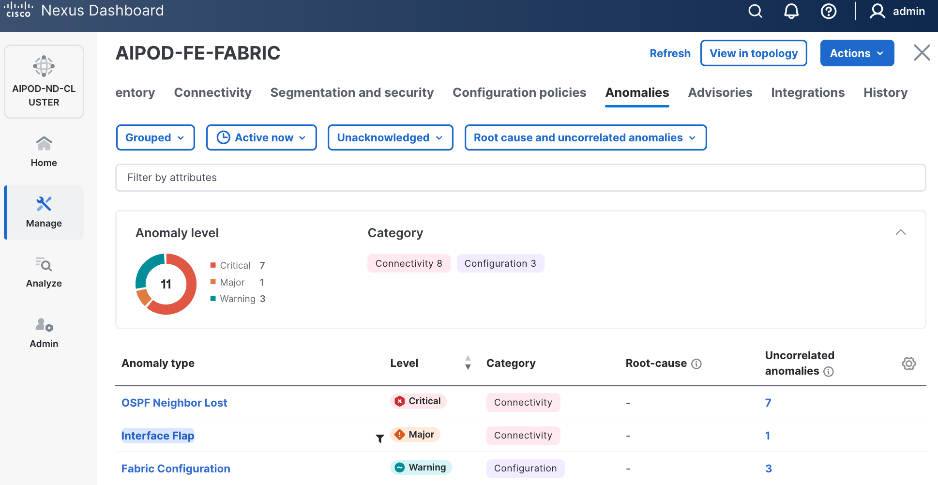

Step 30. ND will identify issues in hardware, connectivity, software and so on, reflected by the Anomaly level. To view the flagged anomalies, go to Anomalies. Address each anomaly to prevent issues later, either by resolving them or acknowledging them.

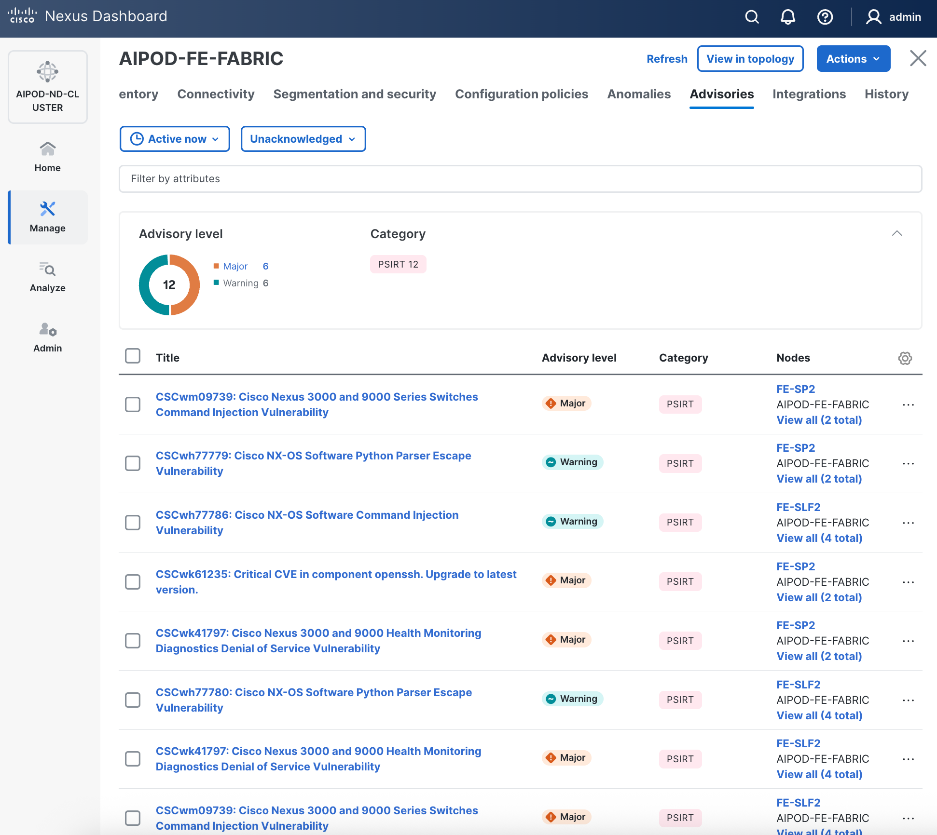

Step 31. Review the Advisories and resolve or acknowledge them.

Step 32. Evaluate and upgrade to Cisco recommended Nexus OS release.

Step 33. Start attaching compute, storage, and other end devices to the cluster.

Enable vPC Pairing on Compute/Management Leaf Switches in the FE Fabric

To enable vPC pairing on the compute/management in the FE fabric, follow the procedures below.

Procedure 1. Enable vPC pairing for compute/management leaf switches in the FE fabric

Step 1. From a web browser go to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

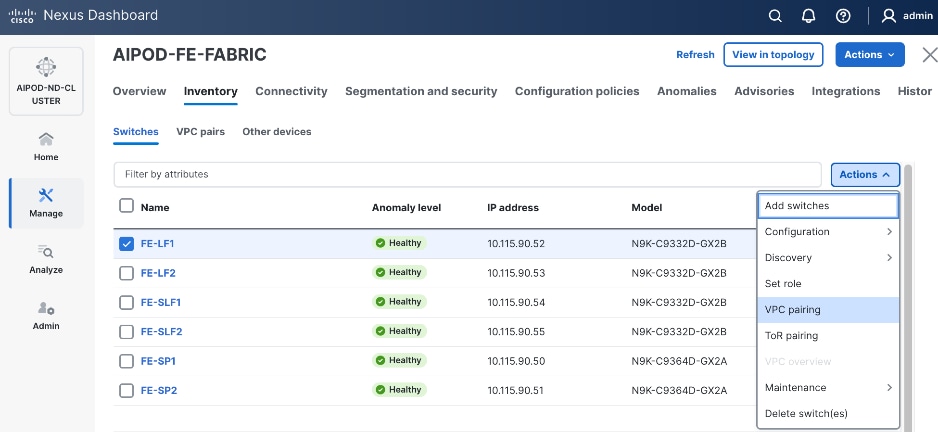

Step 3. Select the FE fabric and click the Inventory tab.

Step 4. To enable VPC pairing on the leaf switches that connect to UCS compute (GPU and management) nodes, select the first leaf switch in the leaf pair.

Step 5. Click the lower Actions button and select VPC pairing from the drop-down list.

Step 6. Select the VPC peer switch for the first compute/management leaf. Enable Virtual Peerlink.

Step 7. Click Save.

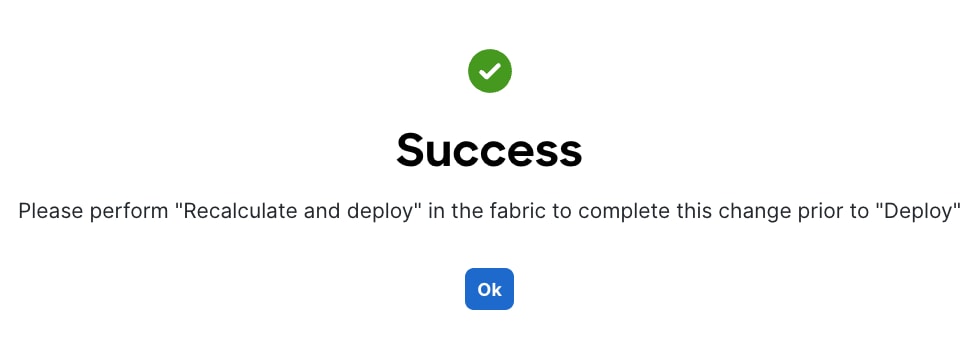

Step 8. Click OK in the Success pop-up window.

Step 9. Select the two leaf switches in the vPC pair that are now Out-of-sync from the configuration change. Click the Actions button and select Recalculate and deploy from the drop-down list.

Step 10. Click Deploy All.

Step 11. When the configuration deployment completes successfully, click Close.

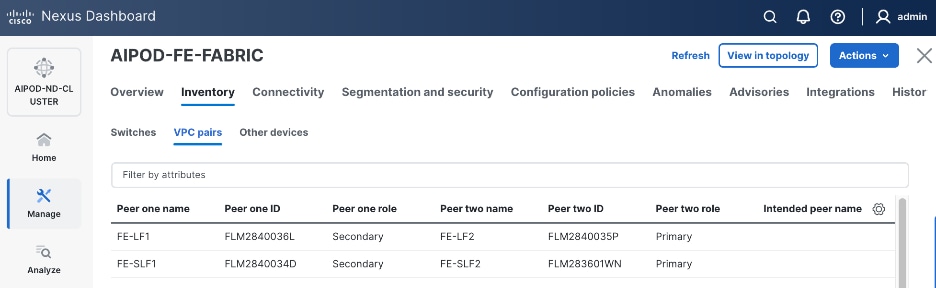

Step 12. From the Inventory tab, go to VPC pairs tab to see the newly created vPC pair.

Enable vPC Pairing on Storage Leaf Switches in the Frontend Fabric

To enable vPC pairing for the storage leaf switches in the FE fabric, follow the procedures below.

Procedure 1. Enable vPC pairing for storage leaf switches in the FE fabric

Step 1. Repeat the steps in the previous procedure to configure storage leaf switches in the FE fabric as vPC peers.

Step 2. From the Inventory tab, go to VPC pairs tab to see the newly created vPC pairs.

Step 3. From the navigation menu, go to Manage > Fabric and select the FE fabric and then the Topology tab, you should now see the 2 Leaf switch pairs grouped in a box, indicating they are vPC peers.

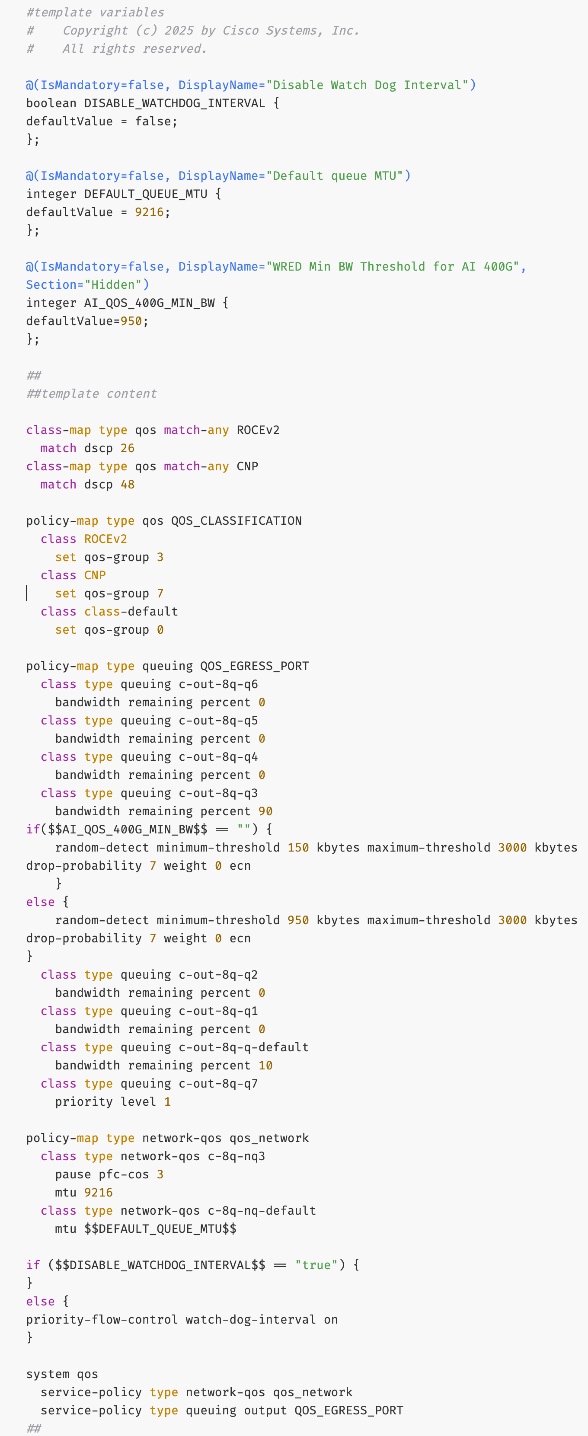

Modify QoS Policy on FE fabric (VAST Data)

Assumptions and Prerequisites

Assumes that you have selected the AI Fabric template with default QoS policy enabled. This section describes how to modify this default policy for the software version used in this CVD.

Setup Information

Table 9. Setup Information for BE Fabric QoS

| Parameter Type |

Parameter Name | Value |

Parameter Type/Other Info |

| QoS Policy Template |

|

|

| Default/Original Policy Template Name |

400G | AI_Fabric_QOS_400G |

|

| New Policy Template Name |

VAST_UNIFIED_QOS_200G |

|

| PFC MTU |

9216 |

Default for this release: 4200 |

| MTU for c-8q-nq3 |

9216 |

Default for this release: 4200 |

Deployment Steps

To change the QoS policy deployed in the frontend fabric, follow the procedures below, using the setup information listed in Table 9.

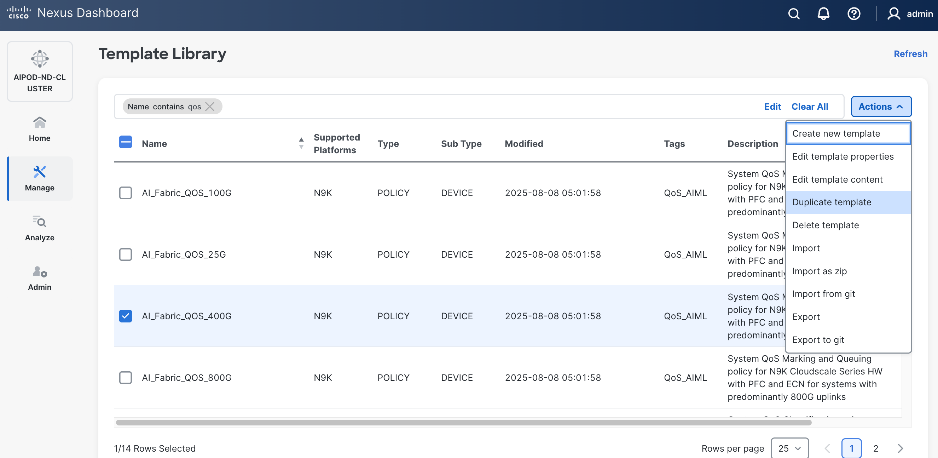

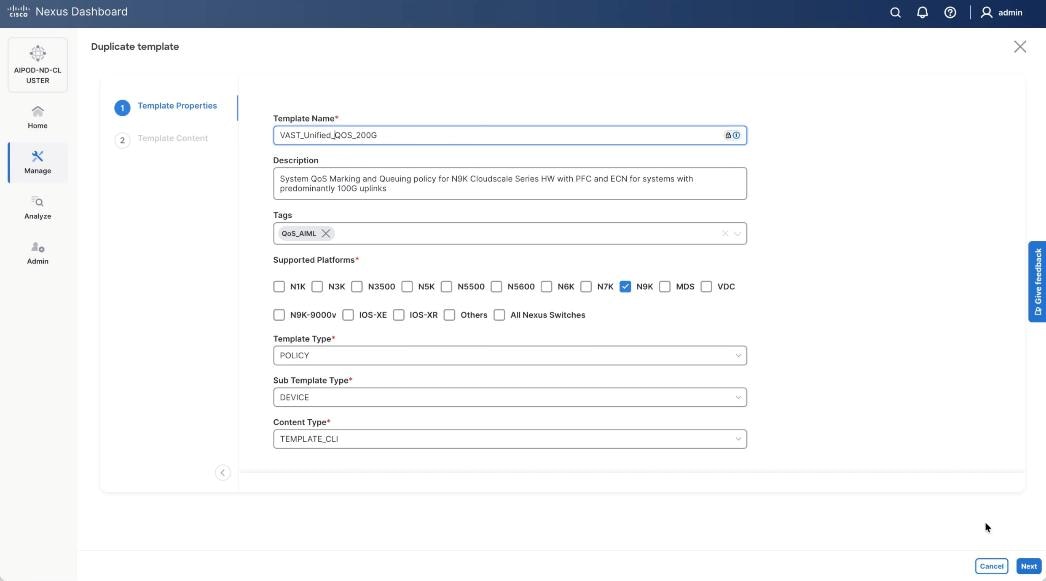

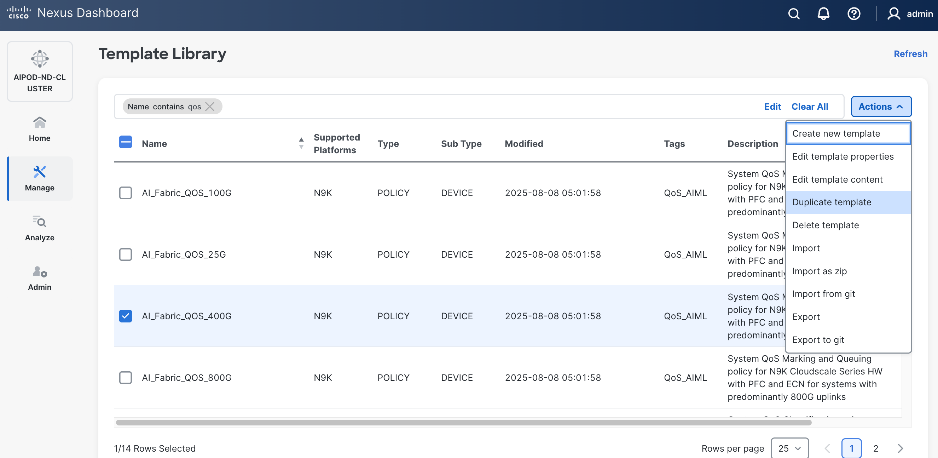

Procedure 1. Create new template from default QoS policy template

Step 1. From a web browser go to Cisco Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. Go to Manage > Template Library.

Step 3. Filter on QOS in top search bar.

Step 4. Select the default QoS policy that was applied when the BE fabric was deployed using the default AI fabric template.

Step 5. Click Actions.

Step 6. Select Duplicate template from the drop-down list.

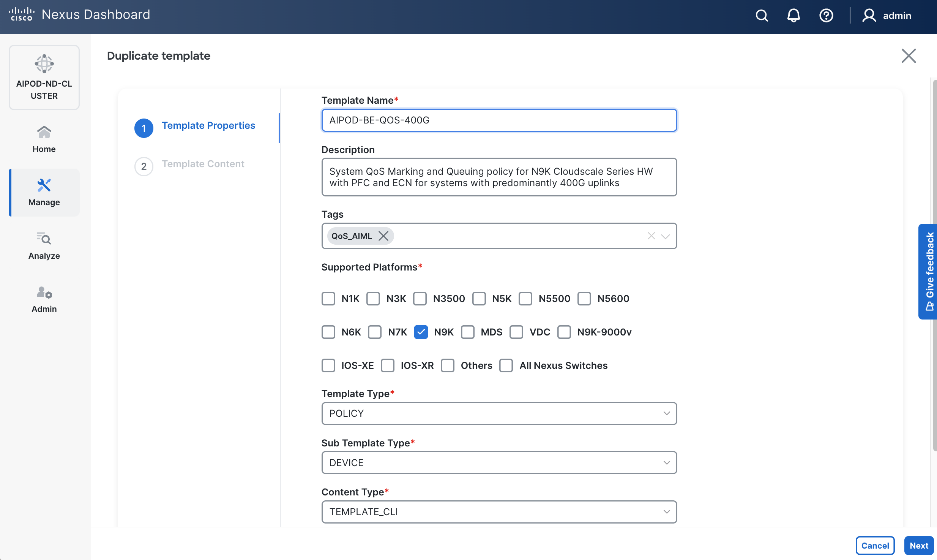

Step 7. In the Template Properties section, specify a new name for the QoS policy template.

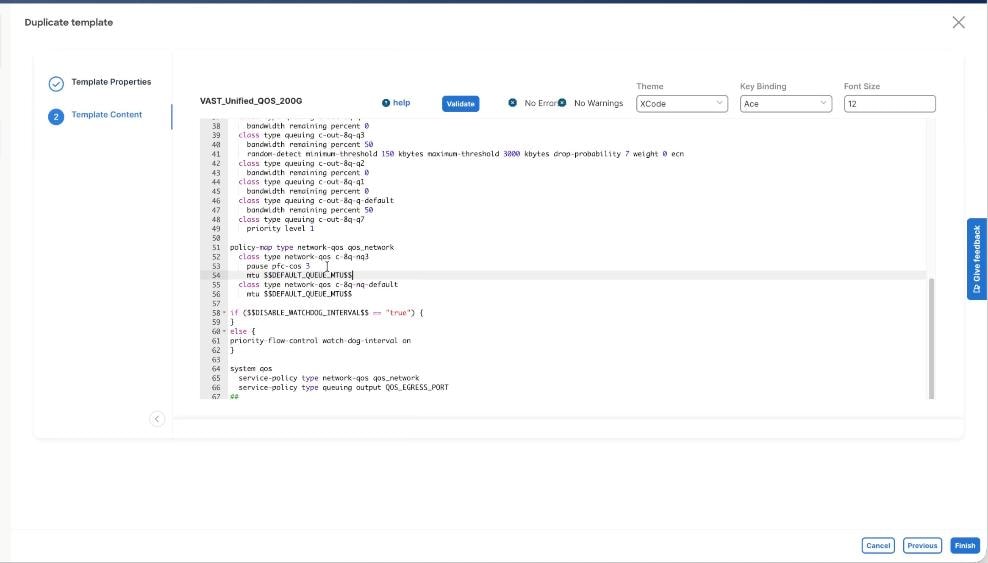

Step 8. In the Template Content section, modify the network-qos class c-8q-nq3 to $DEFAULT_QUEUE_MTU which is 9216. Click Finish.

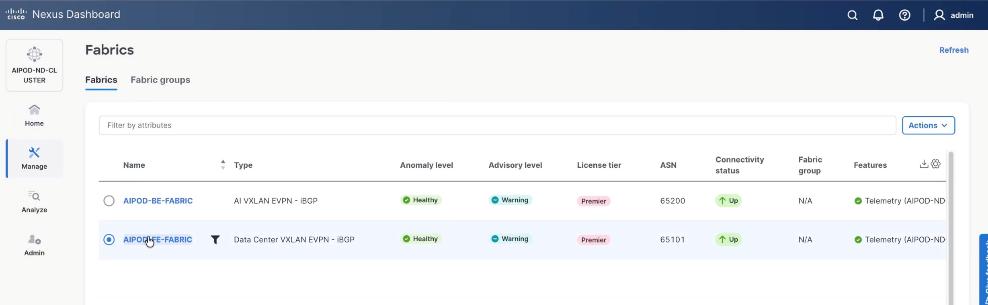

Step 9. Go to Manage > Fabrics. Select the FE fabric from the list and click the FE fabric name.

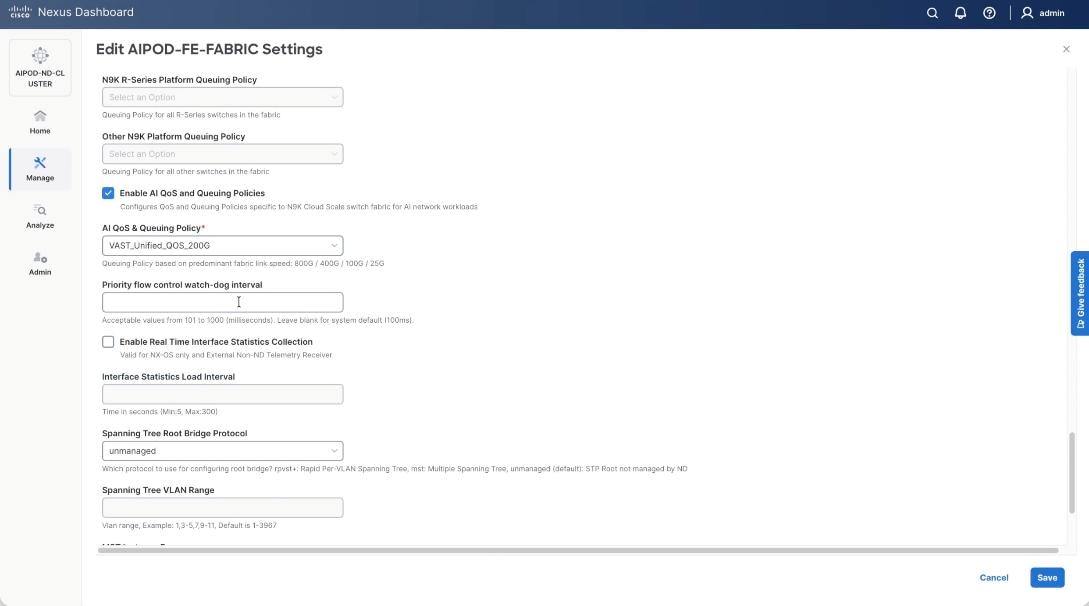

Step 10. Go to Actions > Edit Fabric Settings from the drop-down list. Select the Fabric Management tab and click the Advanced tab. Scroll through the Edit AIPOD-FE-Fabric Settings and check the Enable AI QOS and Queuing Policies option. From the AI QOS and Queuing Policies drop-down list, select the VAST_Unified_QOS_200G policy created in the previous steps. Click Save.

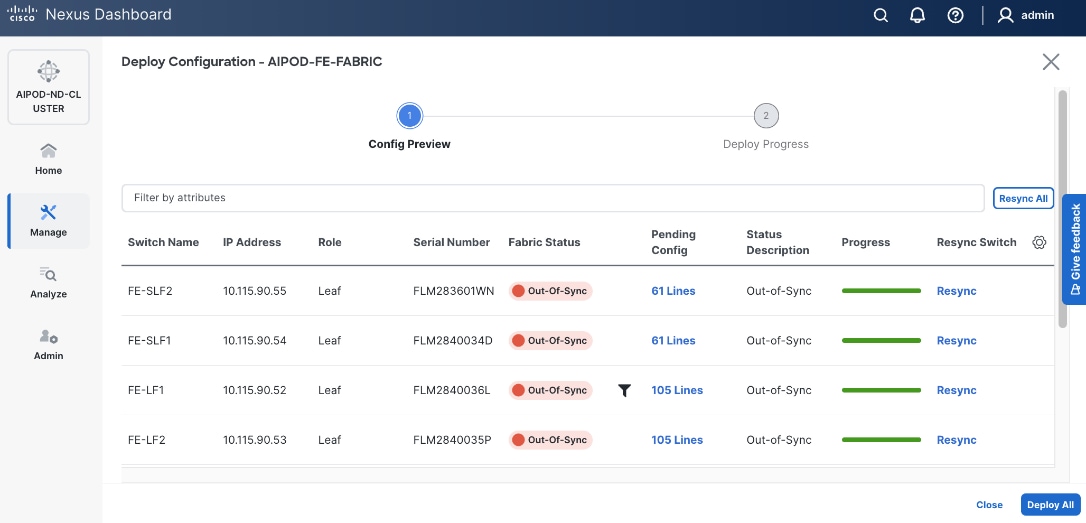

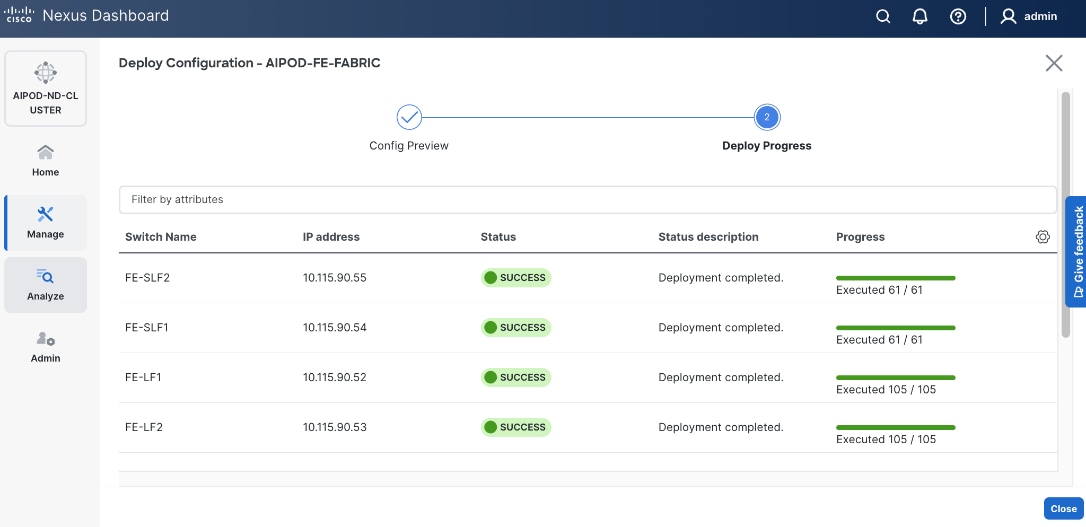

Step 11. From the AIPOD-FE-Fabric, click the Actions tab and select Recalculate and Deploy.

Step 12. Click Deploy All.

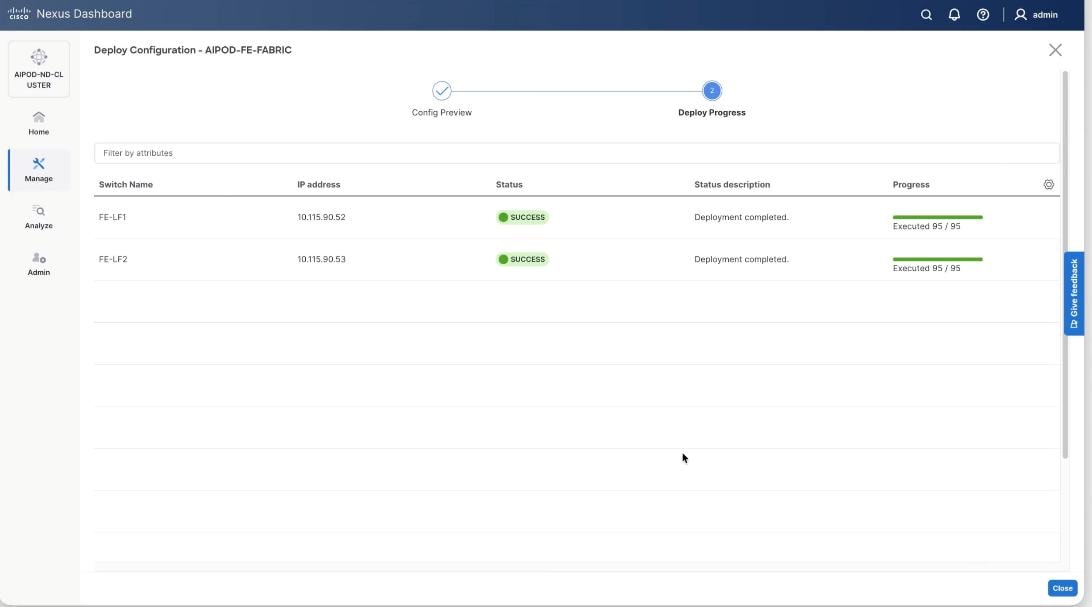

Step 13. Confirm the successful deployment to FE Fabric.

Enable Layer 2 Connectivity to Management UCS X-Direct from FE fabric

Table 10. Setup Parameters for FE Fabric: Layer 2 Connectivity to Management UCS X–Direct

| Leaf Switches |

FE-LF1, FE-LF2 |

|

| Management UCS |

UCS X-Direct with (-A, -B) uplinks; Both uplinks are dual-homed to FE-LF1 & FE-LF2 |

With multiple servers |

| Virtual Port Channel (vPC) |

To UCS X-Direct |

Management UCS-X Direct Chassis |

| vPC/PC1 - ID |

15 |

To UCS X-Direct: Side-A |

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/5, 1/7 |

FI-A: Ports 1/1-4 (PC-11) |

| vPC/PC2 – ID |

16 |

To UCS X-Direct: Side-B |

| Ports |

1/6, 1/8 |

FI-B: Ports 1/1-4 (PC-12) |

To enable Layer 2 connectivity to the management of the Cisco UCS X-Series Direct chassis from the FE fabric, follow the procedures below. You will be configuring two vPCs to the management UCS X-Series Direct, one for -A side and another for -B side. Each vPC will use multiple ports on each compute leaf switch pair to connect to -A and -B uplinks on UCS X-Series Direct chassis.

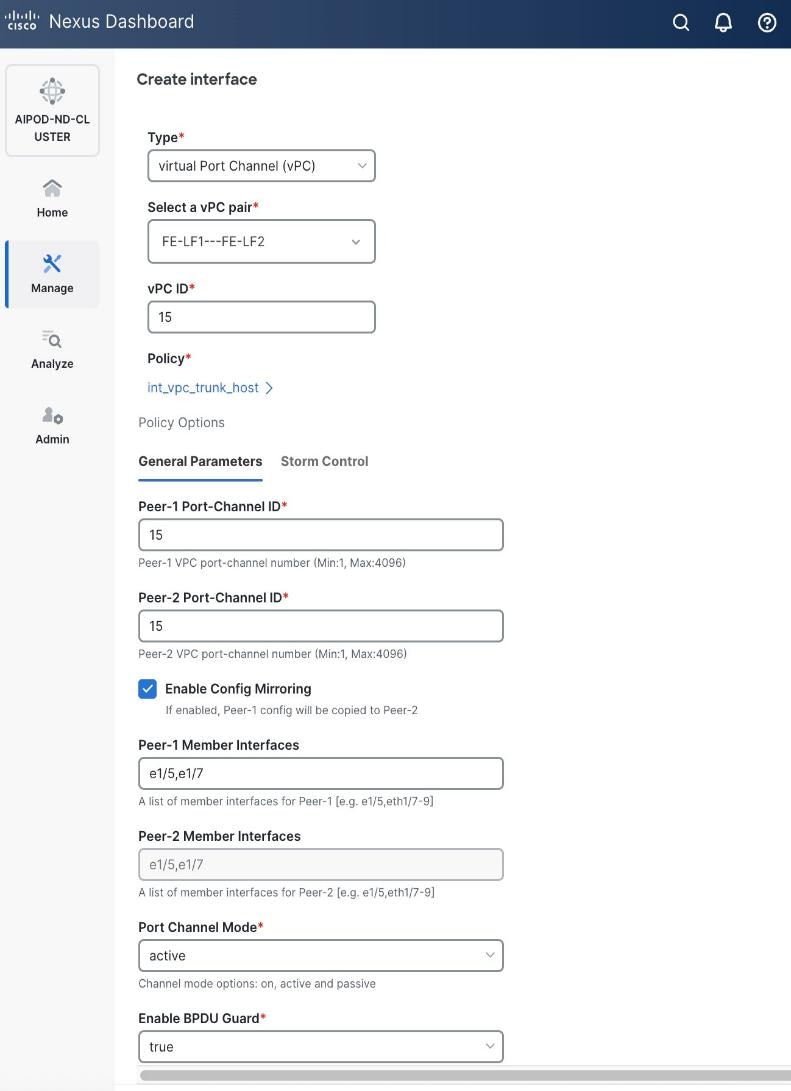

Procedure 1. Deploy first vPC to Management UCS X-Series Direct

Step 1. From a web browser go to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

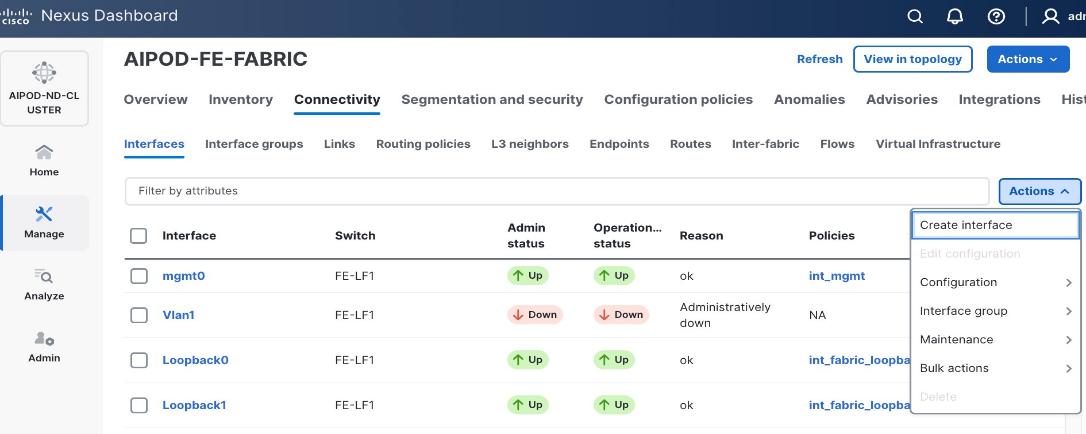

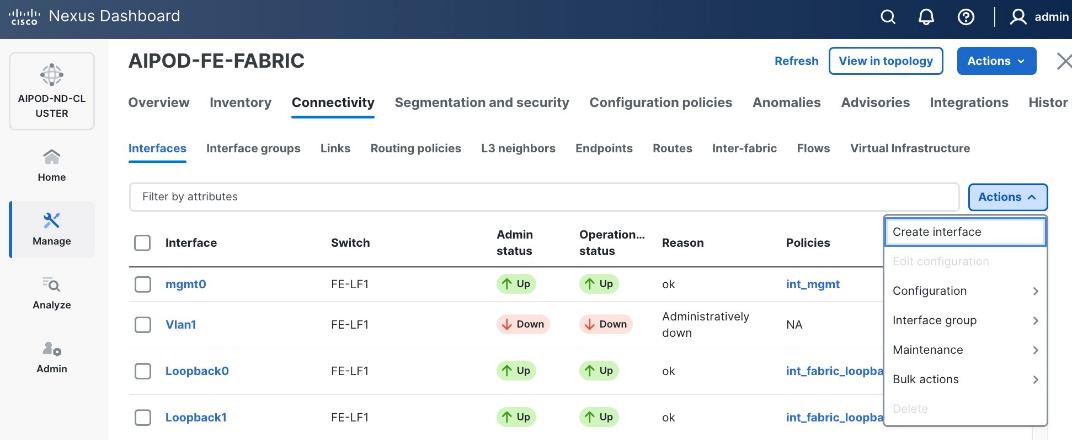

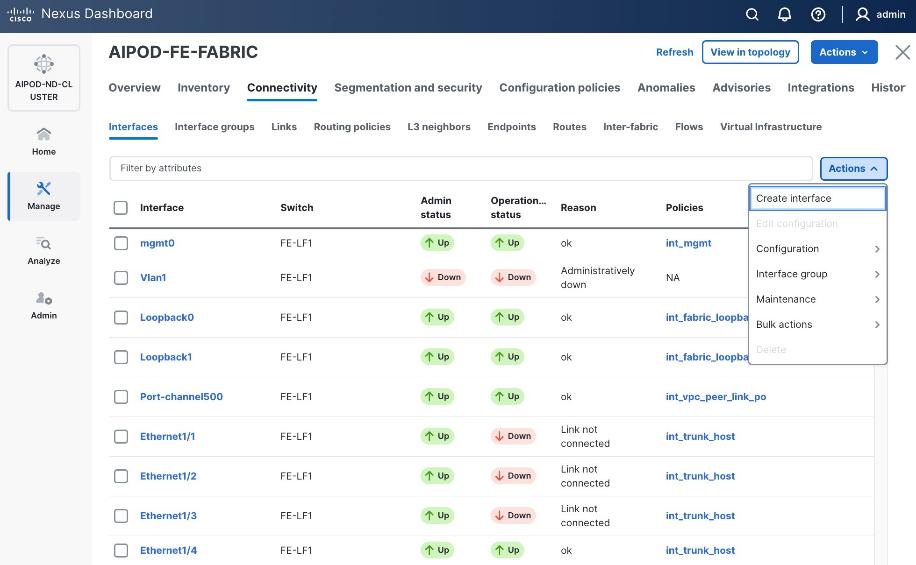

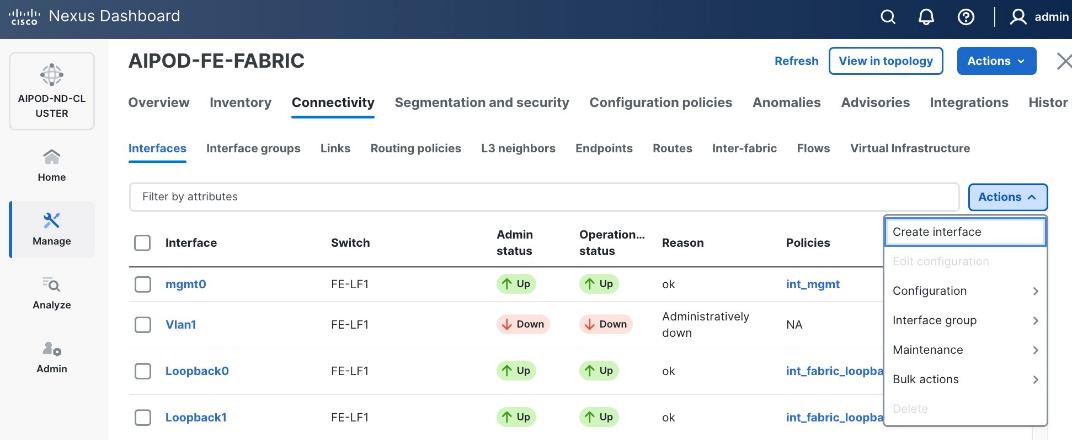

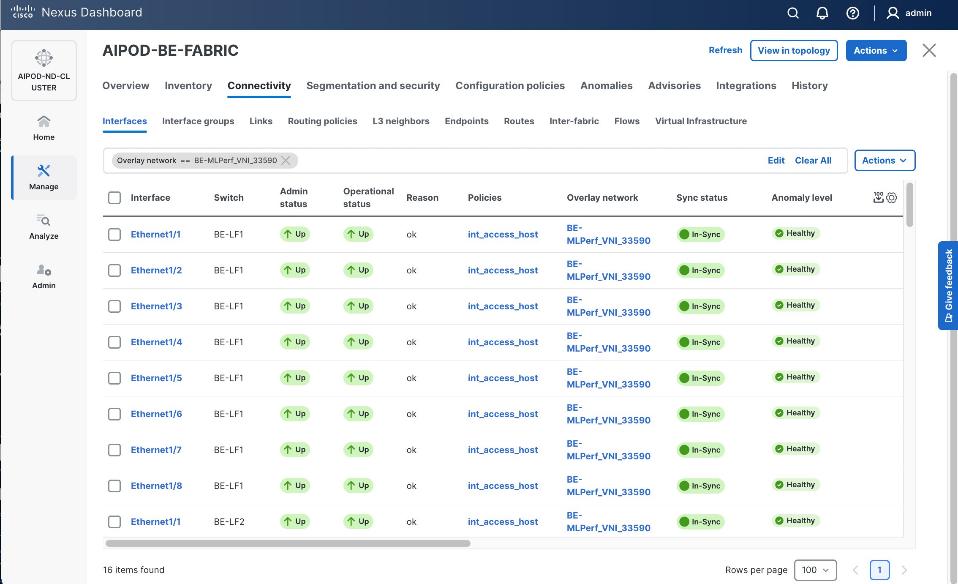

Step 3. Select the FE fabric and go to Connectivity > Interfaces tab.

Step 4. Click the lower Actions button and select Create interface.

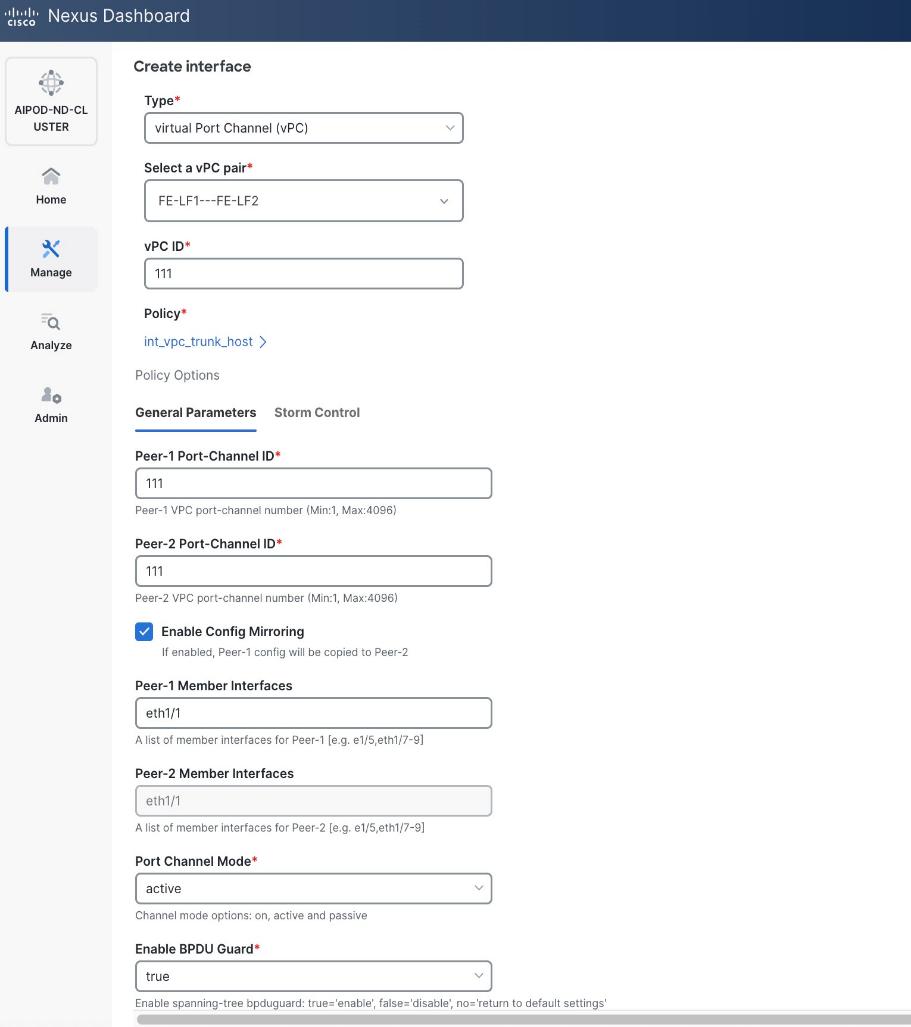

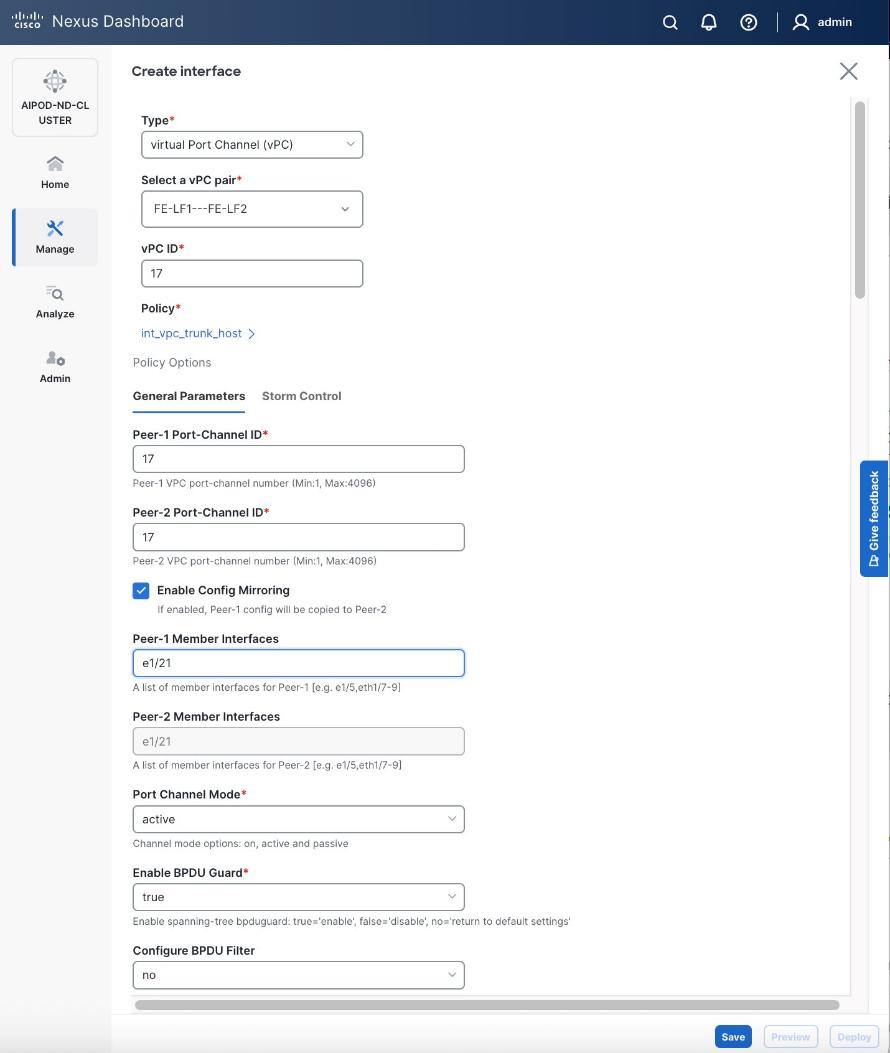

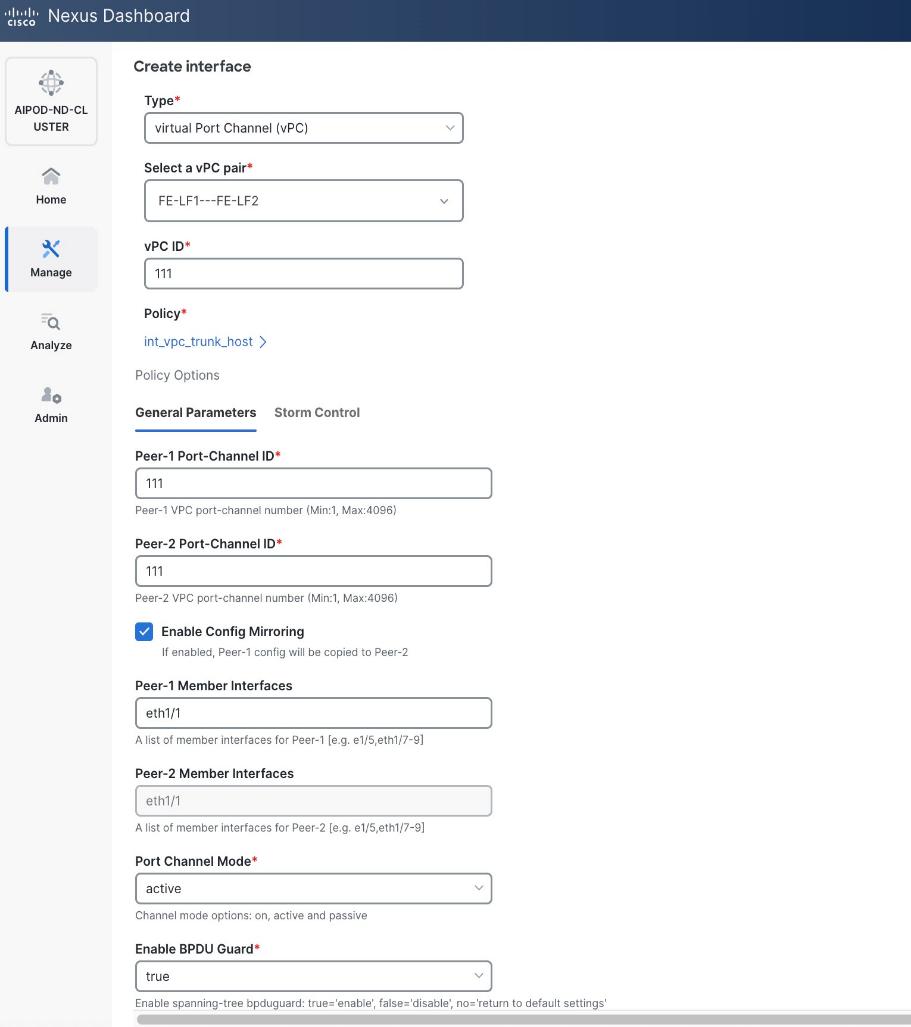

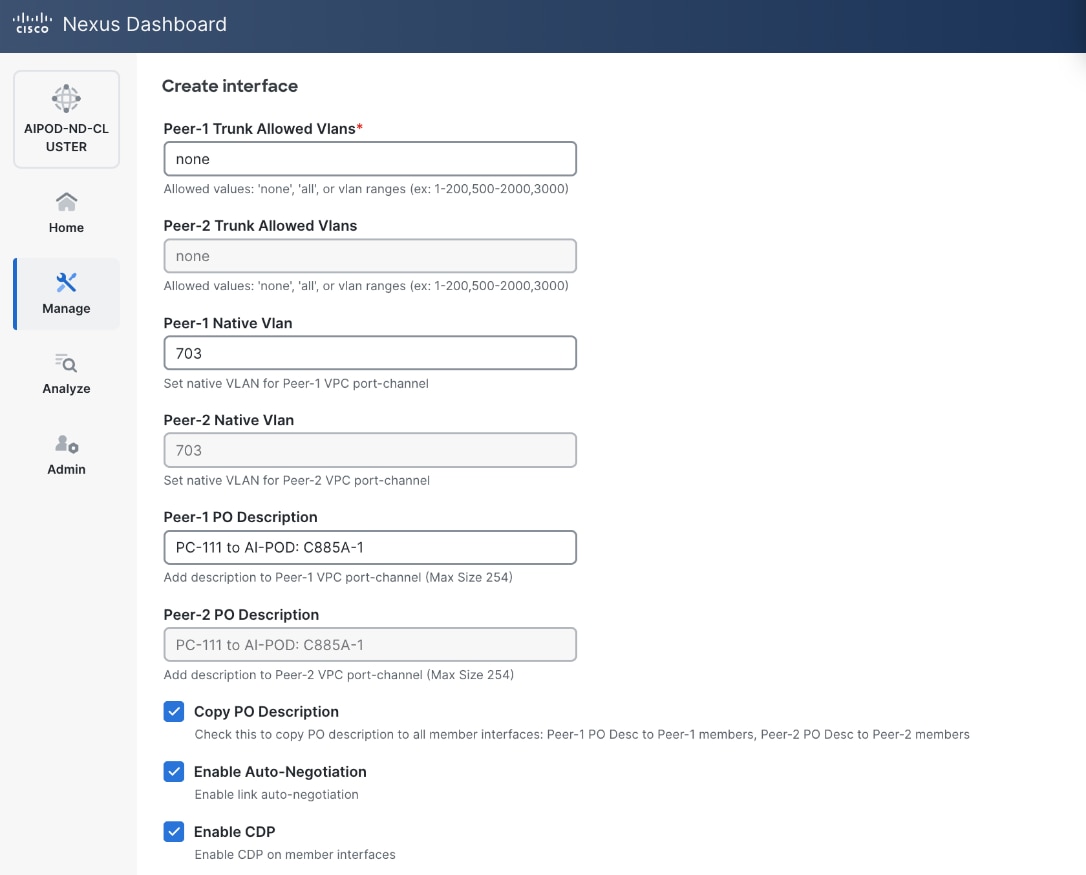

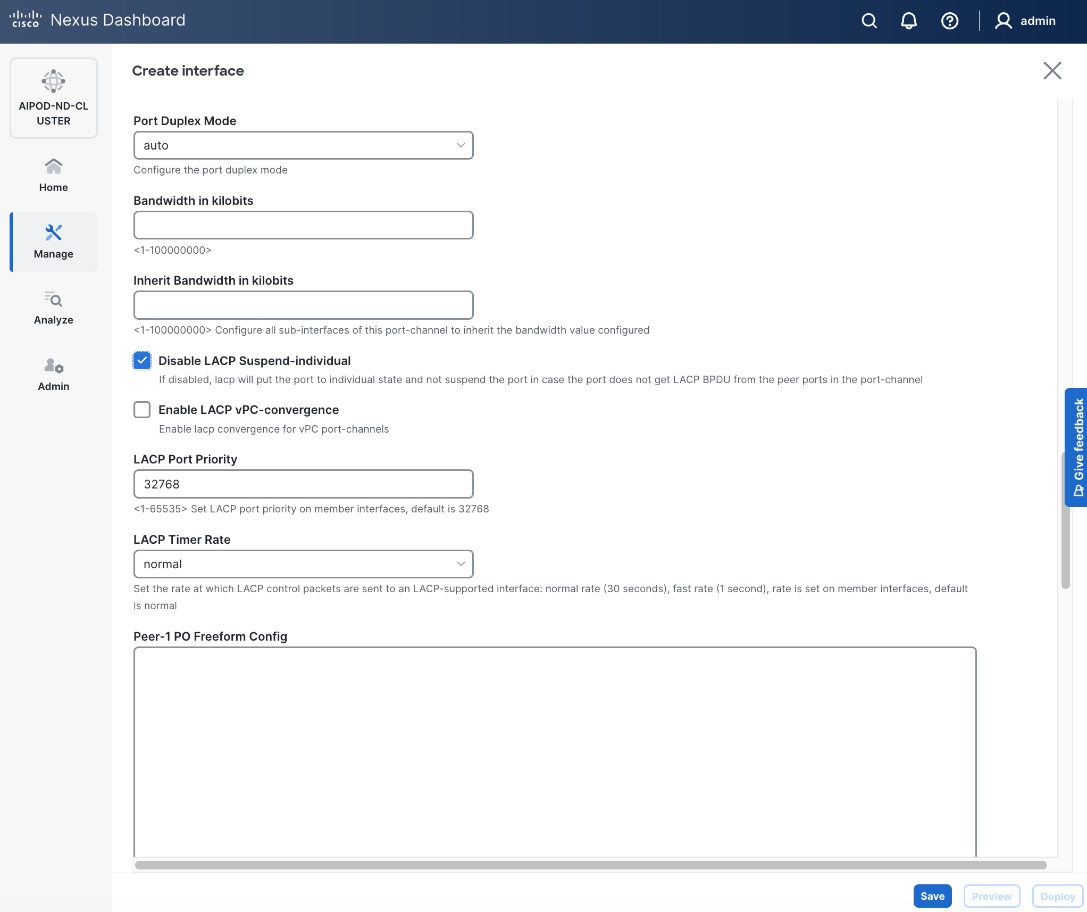

Step 5. In the Create interface window:

● Specify the Type of interface as virtual Port Channel (vPC) from the drop-down list.

● For the Select a vPC pair, select the compute leaf switch vPC pair from the drop-down list.

● Specify a vPC ID for the first vPC to the UCS X-Direct (-A side). Port Channel IDs from each switch to the first UCS node should match the vPC ID (see screenshot below).

● Leave the Policy as int_vpc_trunk_host.

● Enable checkbox for Config Mirroring to configure both vPC peer switches identically.

● Specify Peer-1 Member Interfaces that connects to first UCS node.

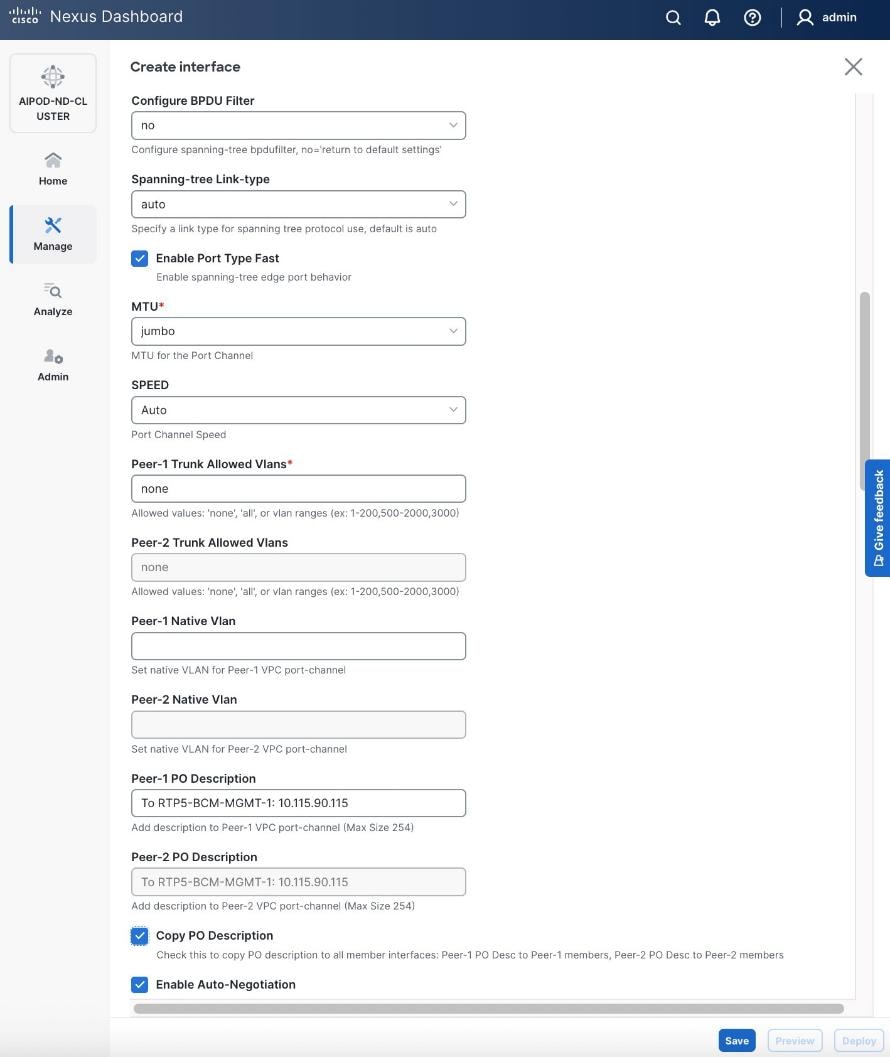

● Leave other fields as-is.

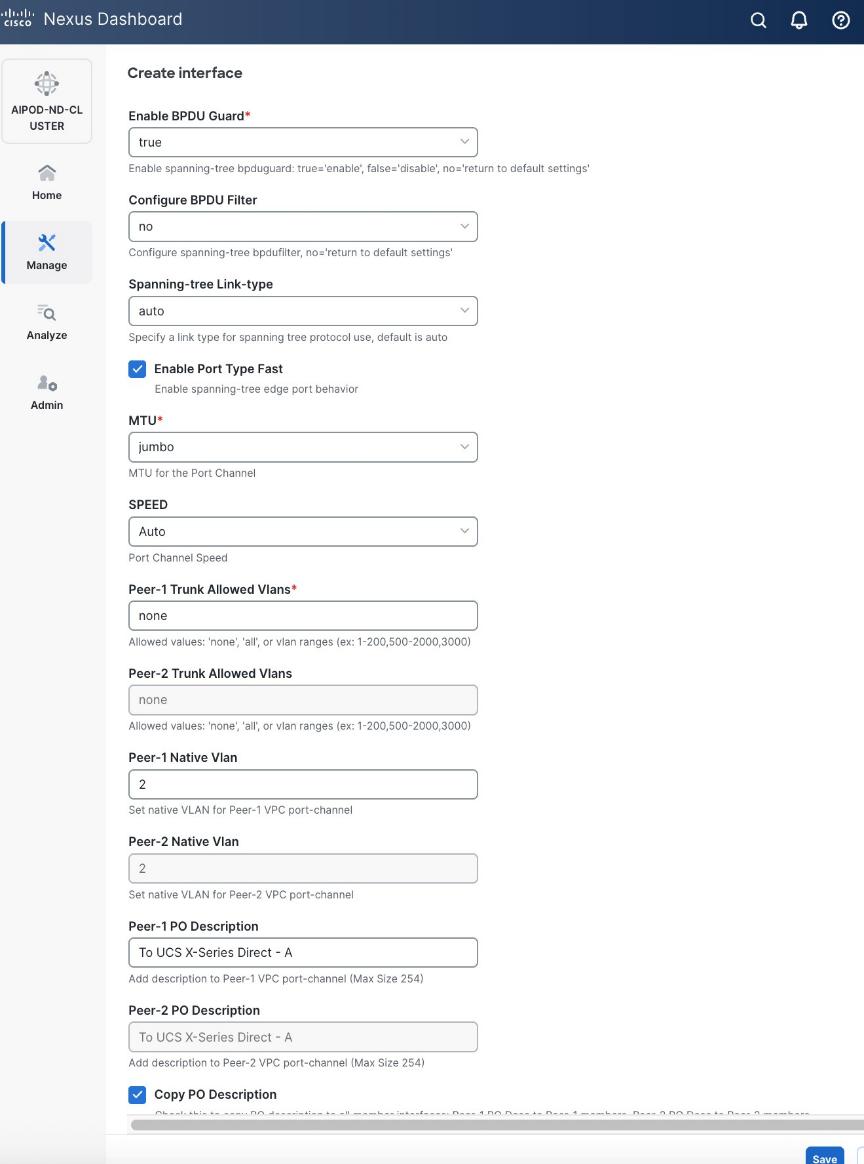

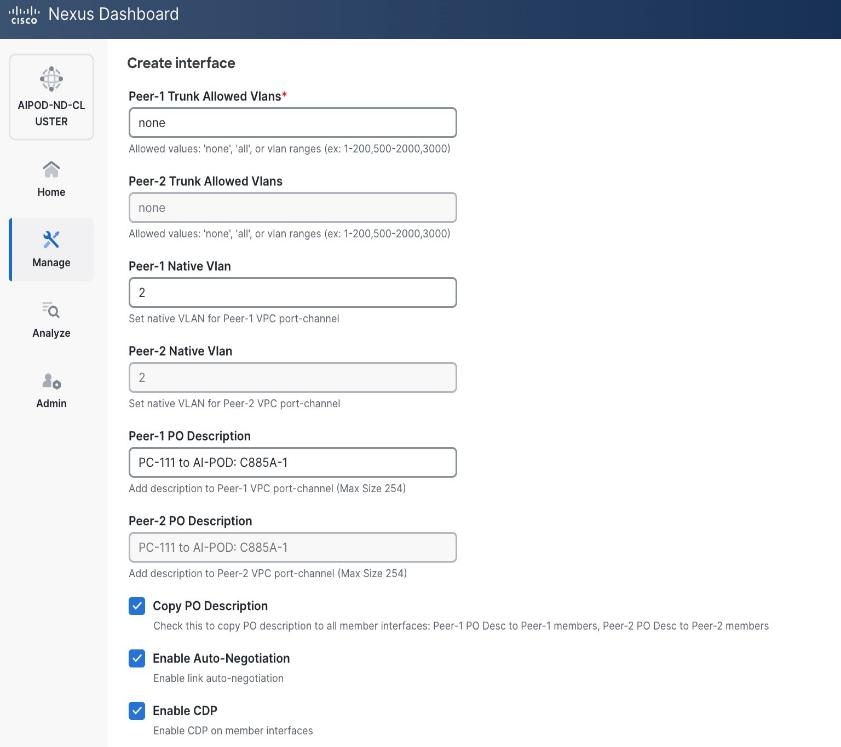

● Scroll down and fill remaining fields: Native VLAN, Peer-1 PO Description, and select the checkbox for Copy PO Description to copy the description to second vPC peer’s Port Channel.

Step 6. Click Save.

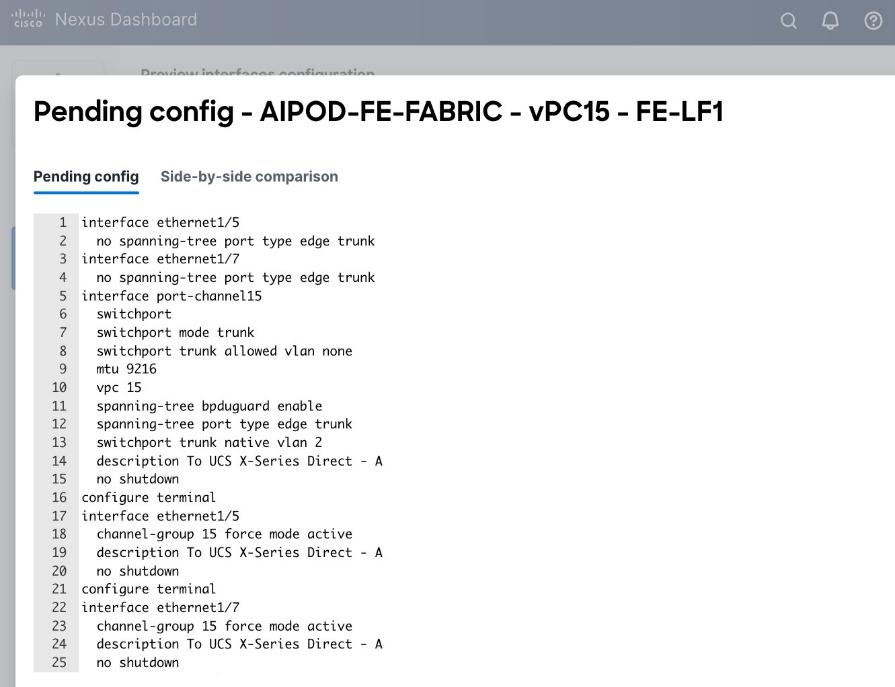

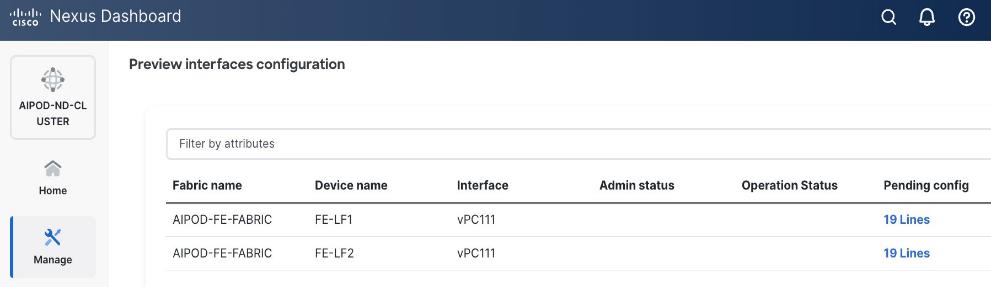

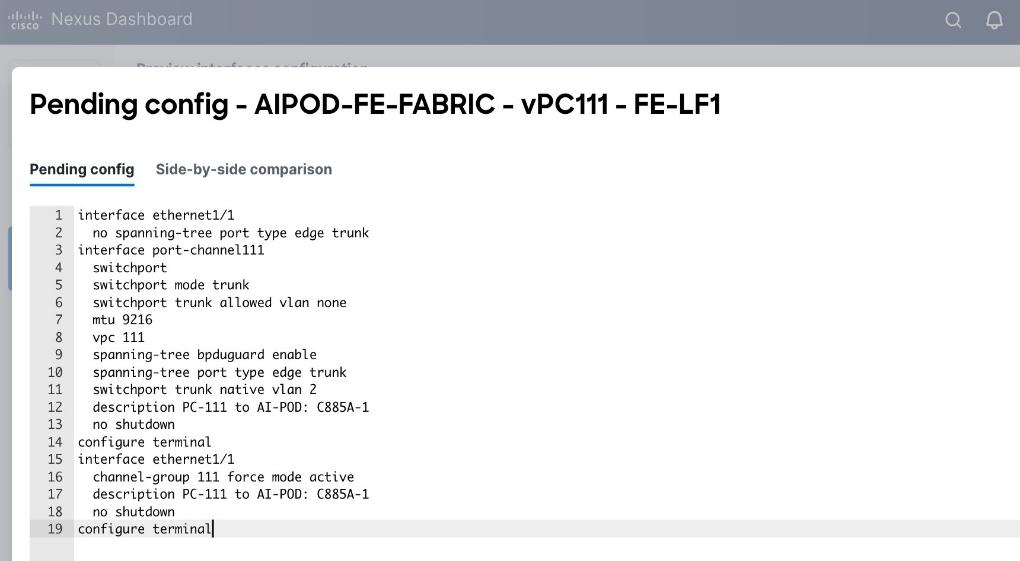

Step 7. Click Preview.

Step 8. Click Close, then click Cancel.

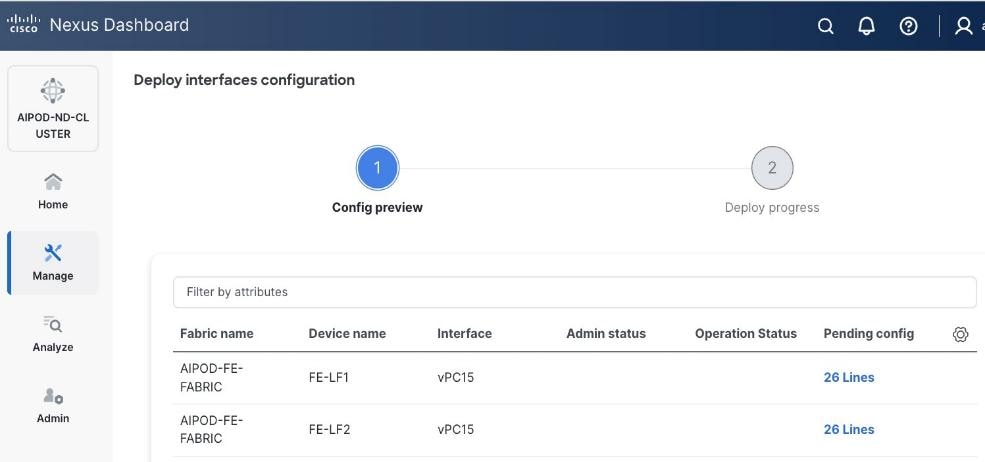

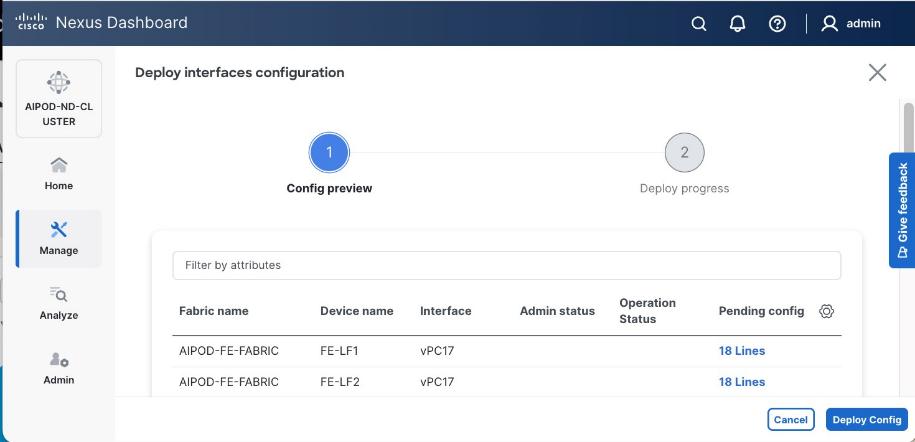

Step 9. Click Deploy. The Pending Config is the configuration shown in the previous step.

Step 10. Click Deploy Config.

Step 11. Verify that all the interfaces and port-channels are up on each switch in the vPC leaf pair that connects to the UCS X-Series Direct (-A side). It may take a few minutes for the vPC to go from Not discovered to consistent state.

Step 12. Repeat this procedure for the second vPC to UCS X-Series Direct (-B side).

Enable Layer 2 Connectivity to UCS GPU Nodes from FE Fabric

To enable layer 2 connectivity to UCS GPU nodes, you will be configuring four vPCs, one per Cisco UCS C885A node. Each vPC will use one port on each switch in the compute leaf pair to connect to the UCS node.

Note: The VAST NFS client, which builds on the standard Linux kernel NFS driver, has the option of local ports, which specifies multiple client-side IPs for outgoing traffic (NFSv3 only). If you use this options, vPC on GPU nodes is not required.

Table 11. Setup Parameters for FE Fabric: Layer 2 Connectivity to UCS GPU Nodes

| Leaf Switches |

FE-LF1, FE-LF2 |

|

| UCS Nodes |

4 x UCS C885A GPU Nodes, each dual-homed to FE-LF1 & FE-LF2 |

|

| Virtual Port Channel (vPC) |

To UCS C885As |

UCS GPU Nodes |

| vPC/PC1 - ID |

111 |

|

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/1 |

On each Leaf switch |

| vPC/PC2 – ID |

112 |

|

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/2 |

On each Leaf switch |

| vPC/PC3 - ID |

113 |

|

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/3 |

On each Leaf switch |

| vPC/PC4 – ID |

114 |

|

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/4 |

On each Leaf switch |

To enable Layer 2 connectivity to UCS C885A GPU nodes from the FE fabric, follow the procedures below.

Procedure 1. Deploy first vPC to first UCS C885A GPU node

Step 1. From a web browser go to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and go to Connectivity > Interfaces tab.

Step 4. Click the lower of Actions button and select Create interface.

Step 5. In the Create interface window:

● Specify the Type of interface as virtual Port Channel (vPC) from the drop-down list.

● For the Select a vPC pair, select the compute leaf switch VPC pair from the drop-down list.

● Specify a vPC ID for the vPC to the first UCS GPU node. Peer-1 and Peer-2 Port-Channel ID should match that of the vPC ID.

● Leave the Policy as int_vpc_trunk_host.

● Enable checkbox for Config Mirroring.

● Specify Peer-1 Member Interfaces that connects to first UCS node.

● Specify Peer-1 Native VLAN.

● Specify Peer-1 PO Description.

● Select the checkbox for Copy PO Description to copy PO description to all member interfaces.

Step 6. Additional configuration changes can be made later as needed. Click Save.

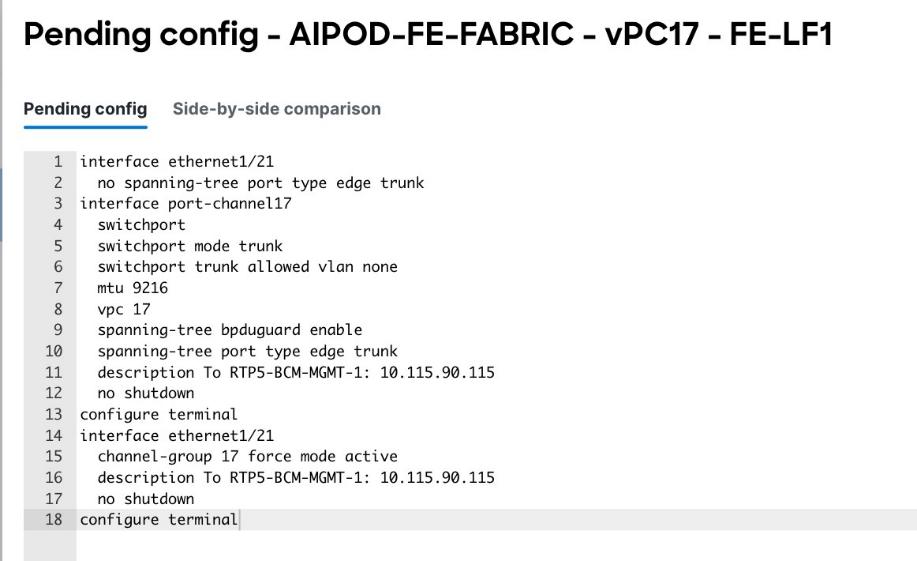

Step 7. Click Preview to view the Pending config changes.

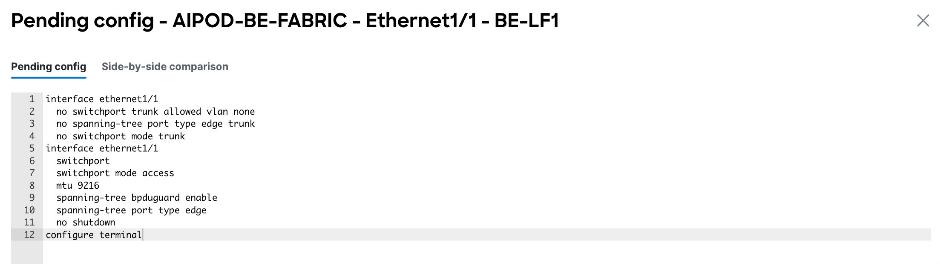

Step 8. Click Pending Config for each switch to see the configuration.

Step 9. Click the X in the top right corner and select Deploy and Deploy config to deploy the Pending config changes.

Step 10. Click Close when deployment completes successfully.

Step 11. Verify that all the interfaces and port-channel is up on each switch in the leaf switch pair that connects to the UCS node. It may take a few minutes for the vPC to go from Not discovered to consistent state.

Step 12. Repeat this procedure to provision layer 2 connectivity from the compute/management leaf switches to the remaining 3 UCS nodes in the cluster.

(Ubuntu) Enable Layer 2 Connectivity to NVIDIA BCM Nodes

If running Ubuntu on the Cisco UCS C885A M8 nodes under NVIDIA BCM, to enable Layer 2 connectivity to the BCM (UCS) node(s) from the FE fabric, you will be configuring two vPCs from the same BCM node: one to compute/management leaf pair and another storage leaf pair.

Table 12. Setup Parameters for FE Fabric: Layer 2 Connectivity to NVIDIA BCME Nodes

| Virtual Port Channel (vPC) |

To BCME Node |

Management/Control/Workload Management Node |

| vPC/PC1 - ID |

17 |

|

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/21 |

|

| vPC/PC1 - ID |

18 |

|

| vPC Pair |

FE-SLF1, FE-SLF2 |

|

| Ports |

1/24 |

|

To enable Layer 2 connectivity to the BCM (UCS) node(s) from the FE fabric, follow the procedures below.

Procedure 1. Deploy first vPC to BCM node from compute leaf switch pair

Step 1. From a web browser go to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and go to Connectivity > Interfaces tab.

Step 4. Click the lower Actions button and select Create interface.

Step 5. In the Create interface window:

● Specify the Type of interface as virtual Port Channel (vPC) from the drop-down list.

● For the Select a vPC pair, select the leaf switch pair from the drop-down list.

● Specify a vPC ID for the first vPC to the BCME node. Port Channel IDs from each switch to the first UCS node should match the vPC ID (see screenshot below).

● Leave the Policy as int_vpc_trunk_host.

● Enable checkbox for Config Mirroring to configure both vPC peer switches identically.

● Specify Peer-1 Member Interfaces that connects to the BCME node.

Step 6. Scroll down and fill remaining fields: Native VLAN (optional), Peer-1 PO Description, Copy PO Description.

Step 7. Click Save.

Step 8. Click Preview.

Step 9. Click Close, the click Cancel.

Step 10. Click Deploy. The Pending Config is the configuration shown in the previous step.

Step 11. Click Deploy Config.

Step 12. Verify that all the interfaces and port-channels are up on each switch in the vPC leaf pair that connects to the BCME node. It may take a few minutes for the vPC to go from Not discovered to consistent state.

Step 13. Repeat this procedure for the second vPC from storage leaf pair to BCME node.

(Ubuntu) Enable Layer 2 Connectivity to UCS GPU Nodes from FE Fabric

If running Ubuntu on the Cisco UCS C885A M8 nodes under NVIDIA BCM, to enable Layer 2 connectivity to UCS C885A GPU nodes from the FE fabric, you will be configuring four vPCs, one per Cisco UCS C885A node. Each vPC will use one port on each switch in the compute leaf pair to connect to the UCS node.

Table 13. Setup Parameters for FE Fabric: Layer 2 Connectivity to UCS GPU Nodes

| Leaf Switches |

FE-LF1, FE-LF2 |

|

| UCS Nodes |

4 x UCS C885A GPU Nodes |

Each node is dual-homed to FE-LF1 & FE-LF2 |

| Virtual Port Channel (vPC) |

To UCS C885As |

UCS GPU Nodes |

| vPC/PC1 - ID |

111 |

To UCS C885A-1 |

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/1 |

On each Leaf switch |

| vPC/PC2 – ID |

112 |

To UCS C885A-2 |

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/2 |

On each Leaf switch |

| vPC/PC3 - ID |

113 |

To UCS C885A-3 |

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/3 |

On each Leaf switch |

| vPC/PC4 – ID |

114 |

To UCS C885A-4 |

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/4 |

On each Leaf switch |

To enable Layer 2 connectivity to UCS C885A GPU nodes from the FE fabric, follow the procedures below. You will be configuring four vPCs, one per Cisco UCS C885A node. Each vPC will use one port on each switch in the compute leaf pair to connect to the UCS node.

Procedure 1. Deploy first vPC to first UCS C885A GPU node

Step 1. From a web browser go to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and go to Connectivity > Interfaces tab.

Step 4. Click the lower Actions button and select Create interface.

Step 5. In the Create interface window:

● Specify the Type of interface as virtual Port Channel (vPC) from the drop-down list.

● For the Select a vPC pair, select the compute leaf switch VPC pair from the drop-down list.

● Specify a vPC ID for the vPC to the first UCS GPU node. Peer-1 and Peer-2 Port-Channel ID should match that of the vPC ID.

● Leave the Policy as int_vpc_trunk_host.

● Enable checkbox for Config Mirroring.

● Specify Peer-1 Member Interfaces that connects to first UCS node.

● Specify Peer-1 Native VLAN.

● Specify Peer-1 PO Description.

● Enable checkbox for Copy PO Description to copy PO description to all member interfaces.

● Select the checkbox for Disable LACP Suspend-individual .

● Leave everything else as is. Additional configuration changes can be made later as needed.

Step 6. Click Save.

Step 7. Click Preview.

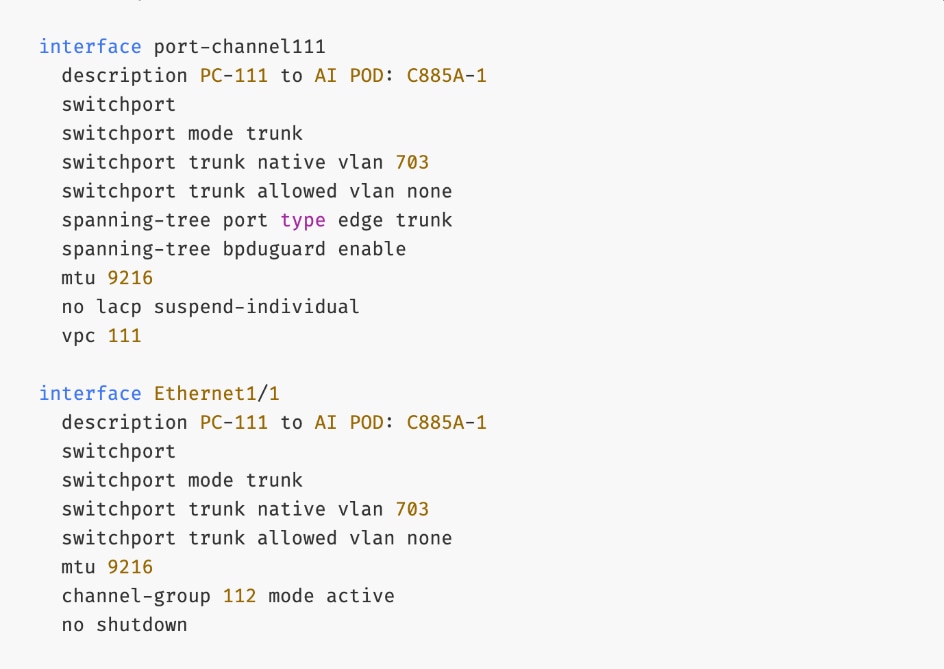

Step 8. To view the Pending config changes, click the Pending Config column for each switch (X lines) to see the configuration. The configuration is provided as a reference from one leaf switch.

Step 9. Click the X in the top right corner and select Deploy and Deploy config to deploy the Pending config changes.

Step 10. Click Close when deployment completes successfully.

Step 11. Verify that all the interfaces and port-channel is up on each switch in the leaf switch pair that connects to the UCS node. It may take a few minutes for the vPC to go from Not discovered to consistent state.

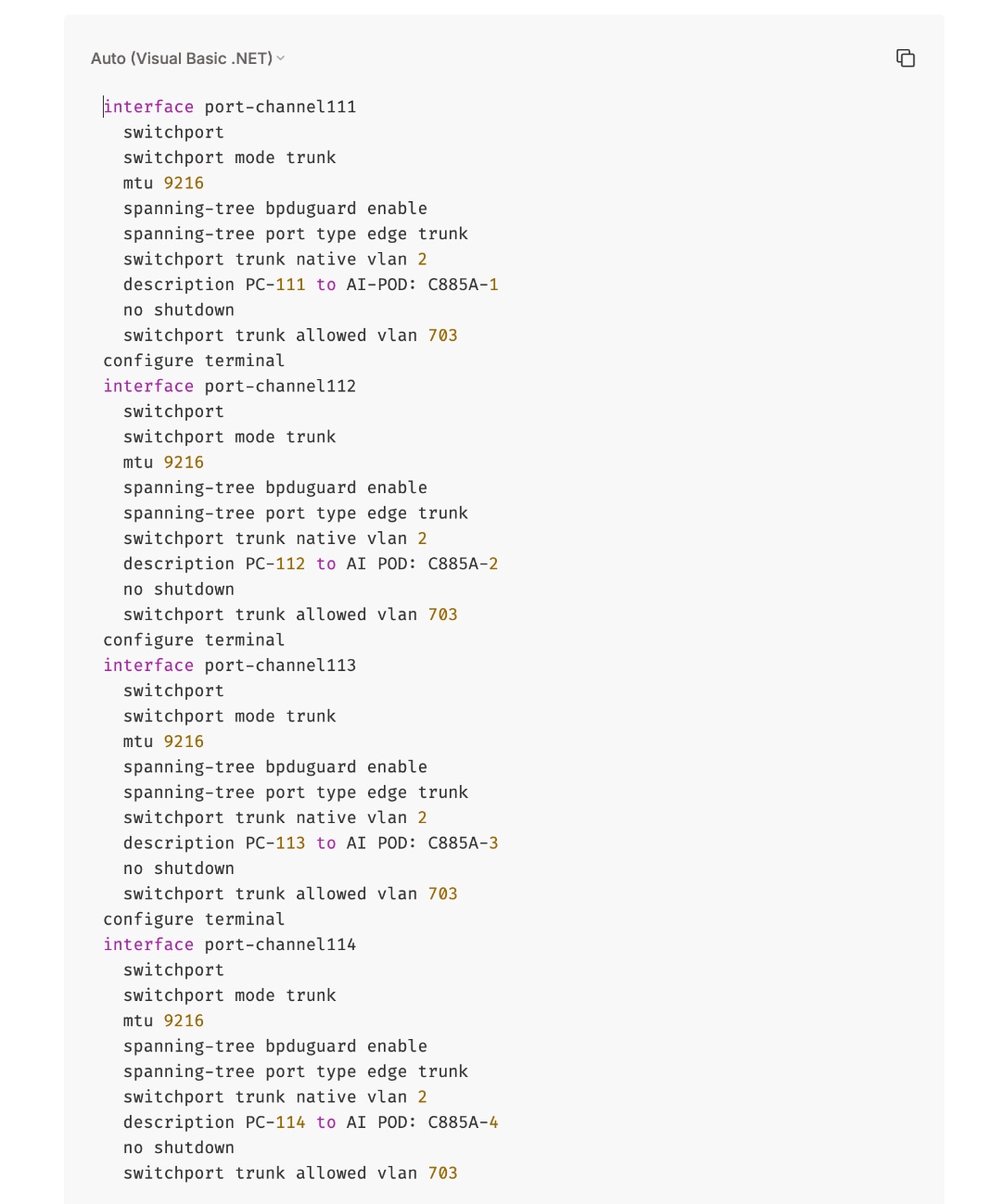

The deployed configuration on one leaf switch is provided as a reference below:

Step 12. Repeat this procedure to provision layer 2 connectivity from the compute/management leaf switches to the remaining 3 UCS nodes in the cluster.

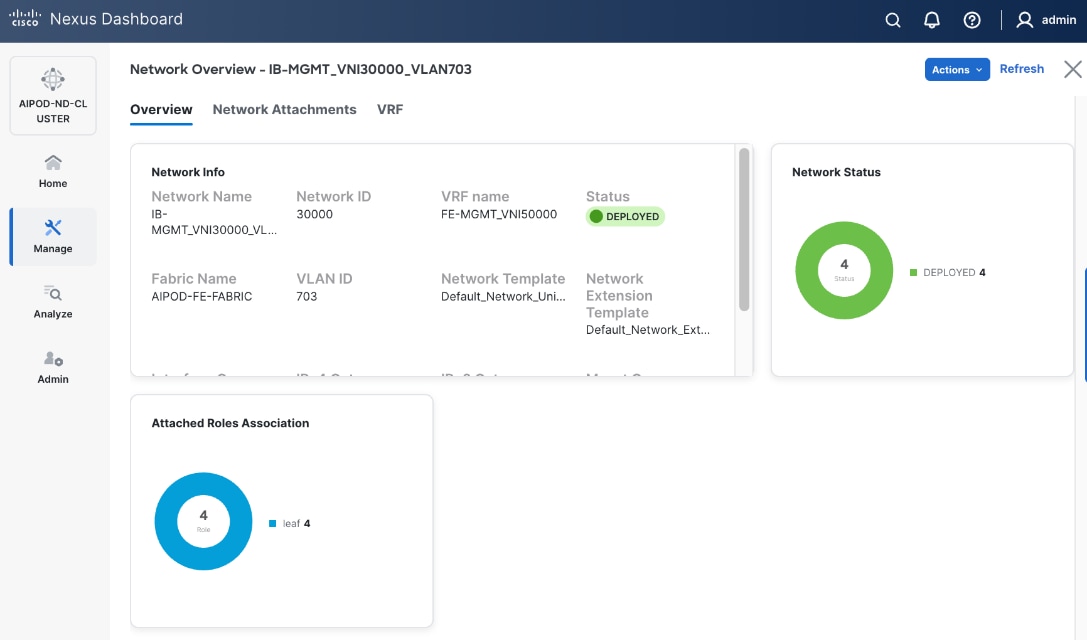

Enable In-Band Management Connectivity to UCS GPU and Management Nodes

The In-band management (IB-MGMT) network in the FE fabric will provide the following connectivity:

● Connectivity from control, management and services nodes to the UCS GPU nodes where the AI workload is running.

● Connectivity to other networks (networks outside this FE fabric to other networks within the enterprise or external to the enterprise).

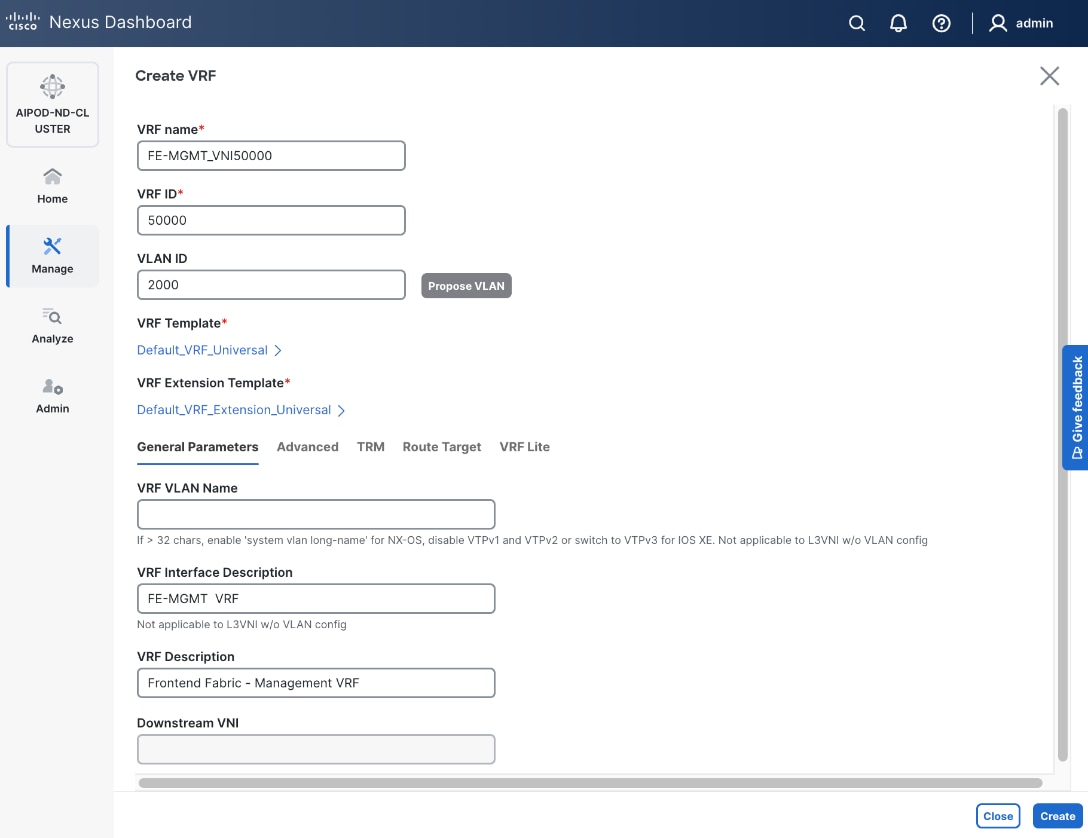

Table 14. Setup Parameters for FE Fabric: In-Band Management Connectivity to UCS Management and GPU Nodes

| IB-MGMT Network |

|

|

| Name |

IB-MGMT_VN30000_VLAN703 |

|

| Layer 2 Only |

No |

|

| IB-MGMT VRF |

|

|

| VRF Name |

FE-MGMT_VN50000 |

|

| VRF ID |

50000 |

(System Proposed) |

| VLAN ID |

2000 |

(System Proposed) |

| VRF Interface Description |

FE-MGMT VRF |

|

| VRF Description |

Frontend Fabric – Management VRF |

|

| IB-MGMT Network Contd. |

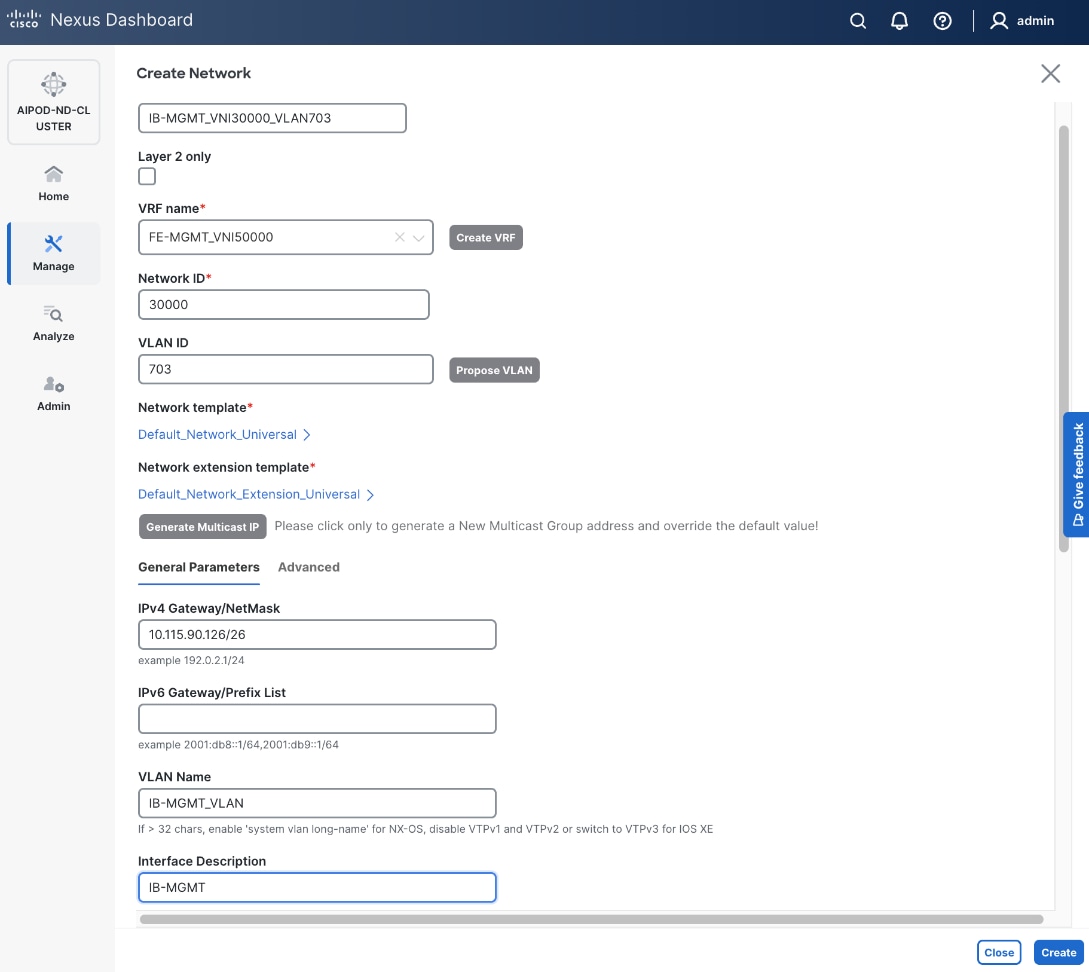

|

|

| Network ID |

30000 |

|

| VLAN ID |

703 |

|

| IPv4 Gateway/Netmask |

10.115.90.126/26 |

|

| VLAN Name |

IB-MGMT_VLAN |

|

| Interface Description |

IB-MGMT |

|

| UCS C885A GPU Nodes |

|

|

| vPC Leaf Switch Pair |

FE-LF1, FE-LF2 |

vPC Leaf Switch Pair |

| UCS C885-A Node-1 Interface |

Port-Channel 111 |

|

| UCS C885-A Node-2 Interface |

Port-Channel 112 |

|

| UCS C885-A Node-3 Interface |

Port-Channel 113 |

|

| UCS C885-A Node-4 Interface |

Port-Channel 114 |

|

| Management UCS X-Direct Chassis |

|

|

| vPC Leaf Switch Pair |

FE-LF1, FE-LF2 |

|

| UCS X-Direct (-A Uplinks) |

Port-Channel 15 |

|

| UCS X-Direct (-B Uplinks) |

Port-Channel 16 |

|

To deploy the in-band management network and enable connectivity to the UCS GPU nodes, follow the procedures below.

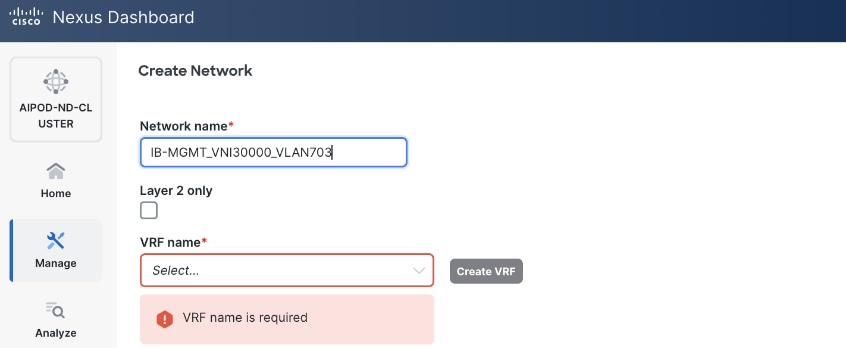

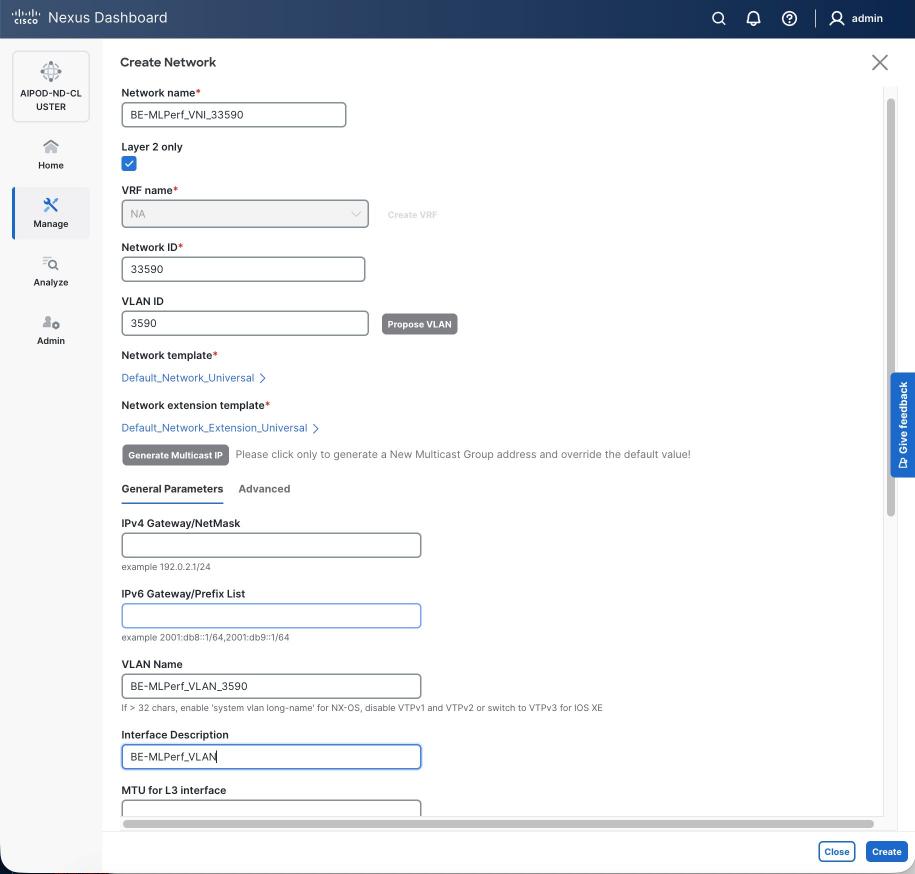

Procedure 1. Deploy In-Band Management Connectivity for UCS GPU Nodes

Step 1. From a web browser go to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the navigation menu, go to Manage > Fabrics.

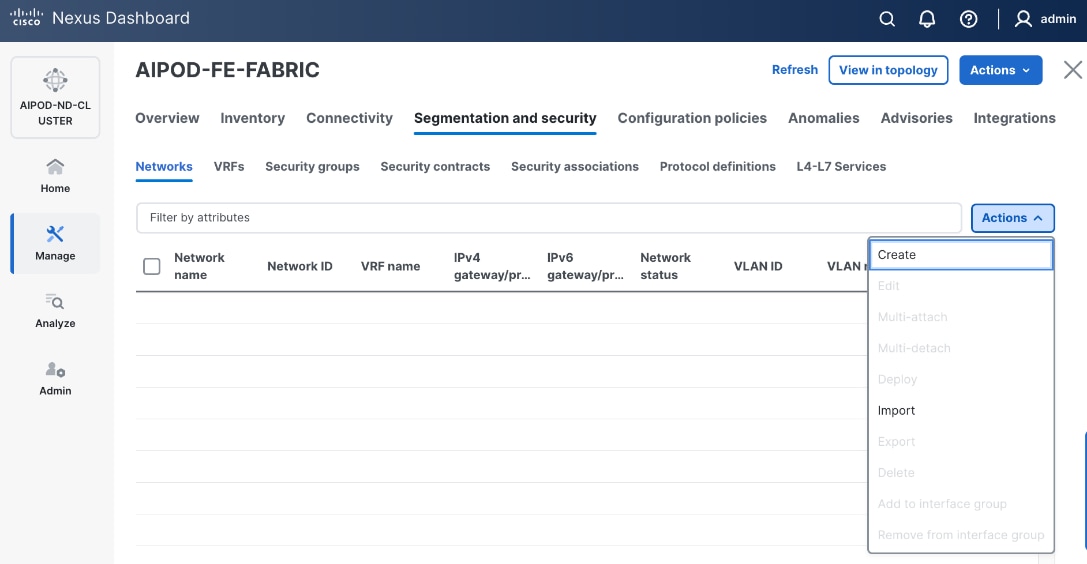

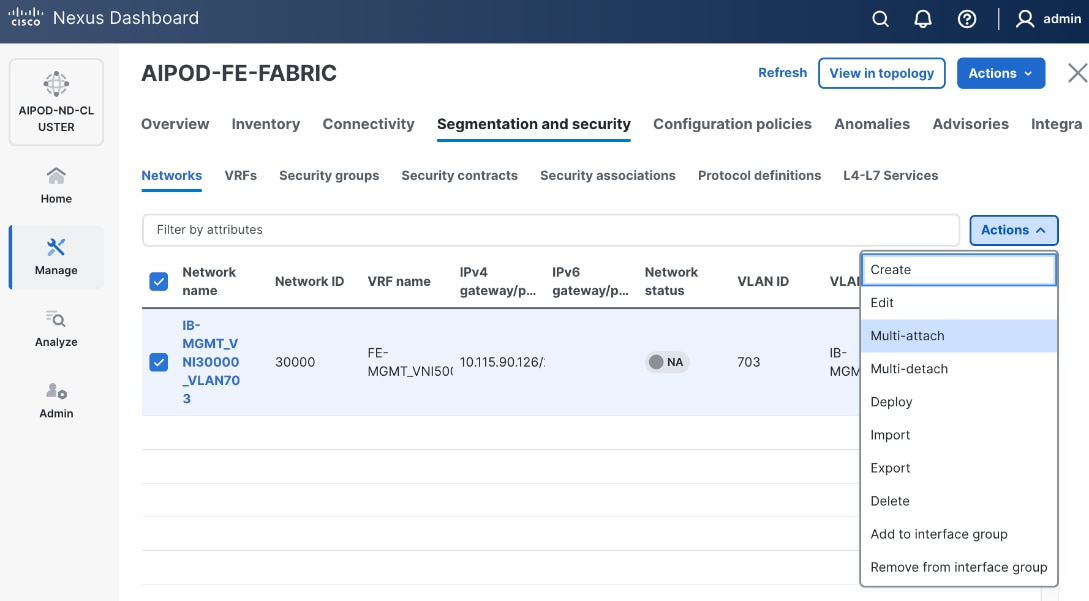

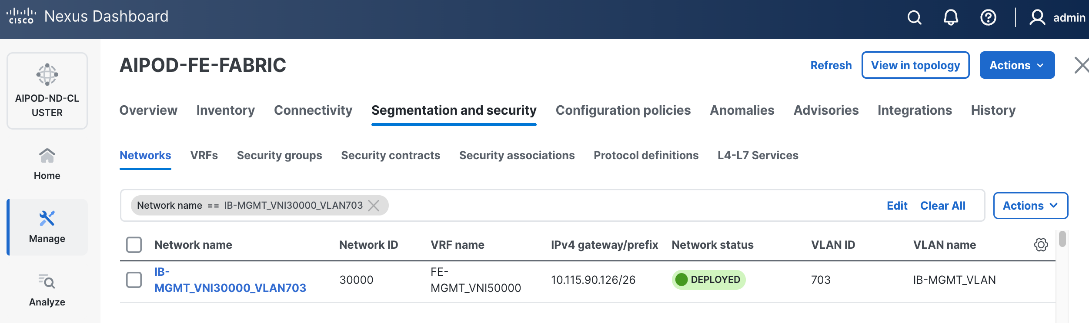

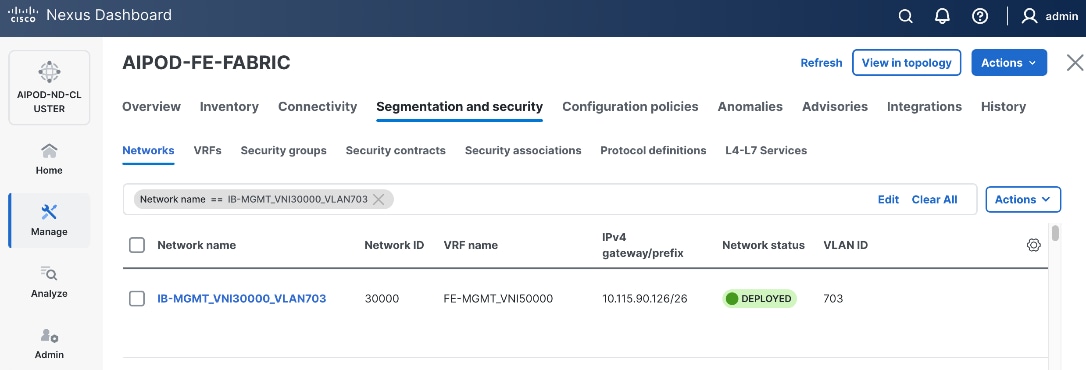

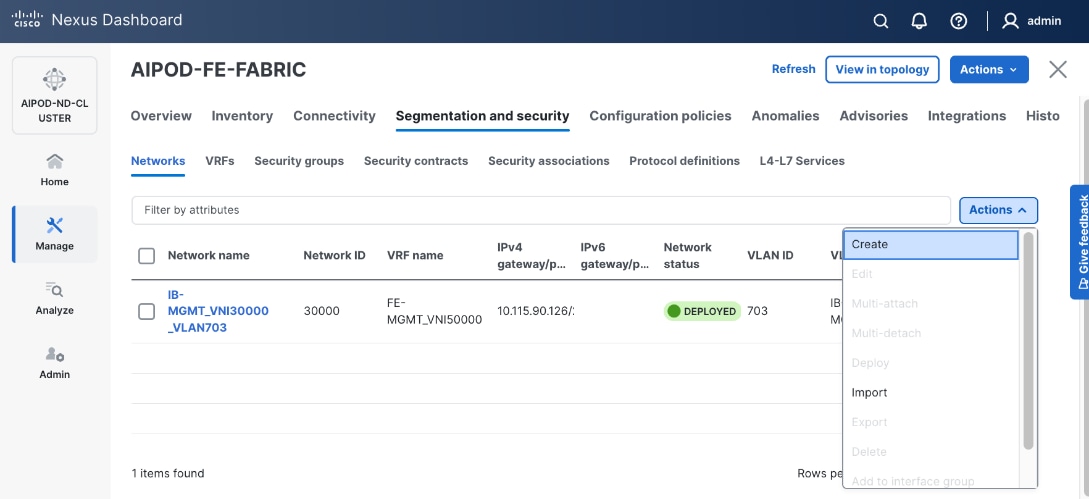

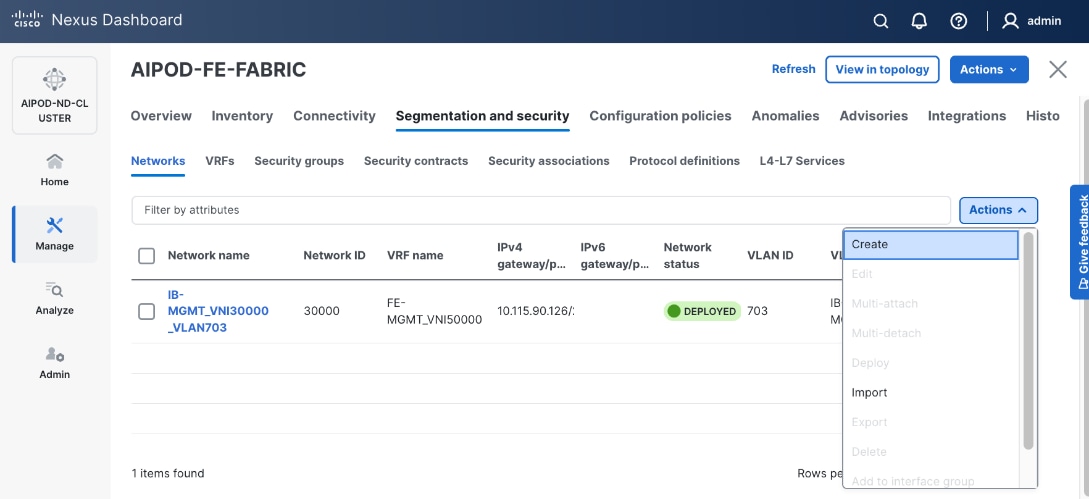

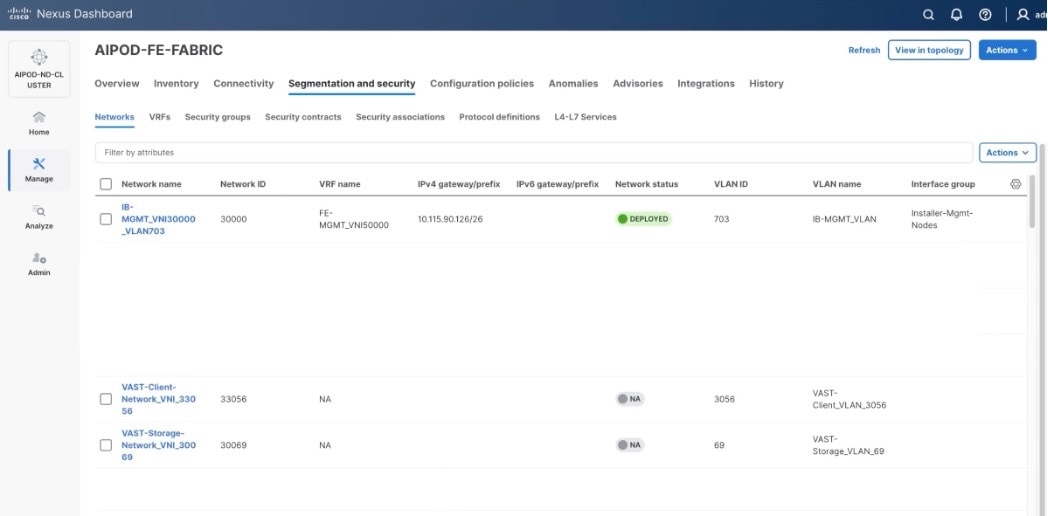

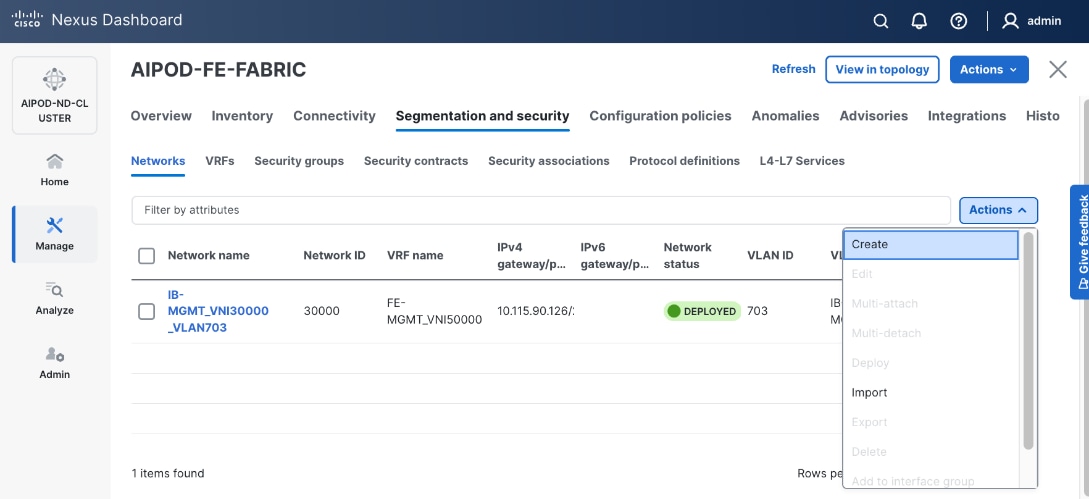

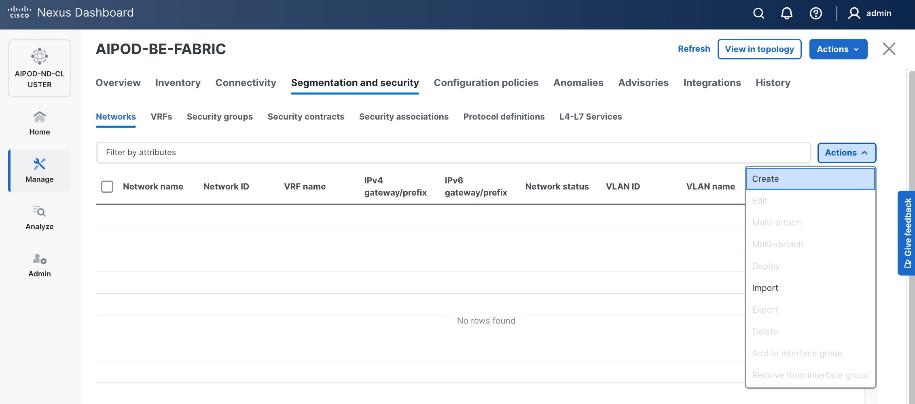

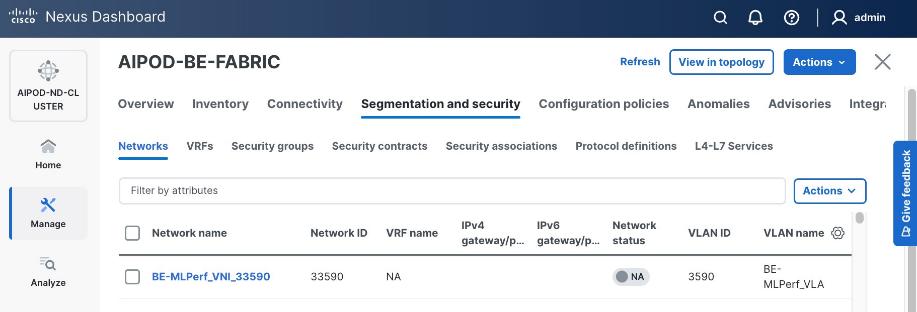

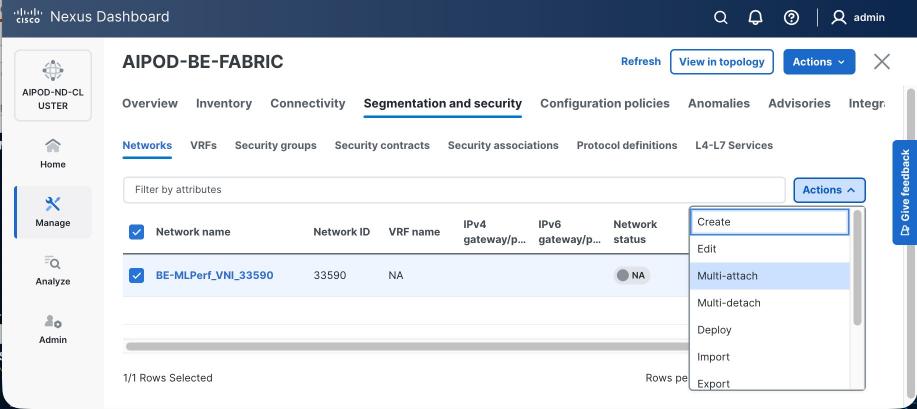

Step 3. Select the FE fabric and go to Segmentation and Security > Networks tab.

Step 4. Click the lower Actions button and select Create from the list.

Step 5. In the Create Network window, specify the following:

● Network name for the IB-MGMT network.

● Leave unchecked the Layer 2 only checkbox as IB-MGMT is a layer 3 overlay network.

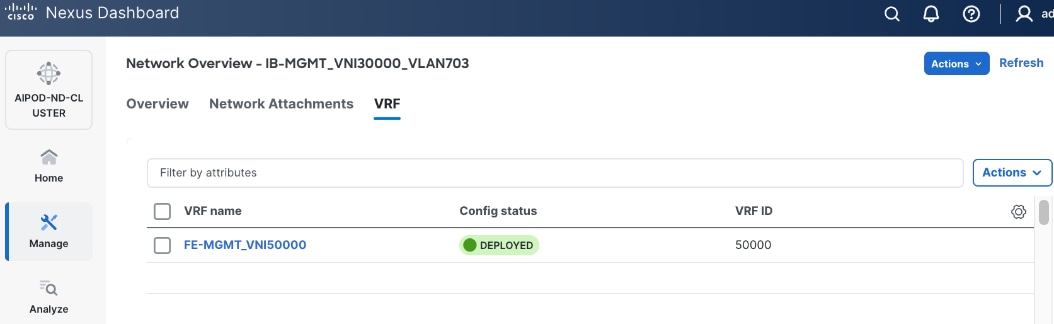

● VRF name. If a VRF hasn't been created already, you have an option from this window to also create a VRF.

● To create a new VRF, click on Create VRF. In the Create VRF window, specify VRF ID (or use default), VLAN ID (or click the Propose VLAN button to let system define a VLAN), and optionally other parameters as shown in the following screenshot.

Step 6. Click Create to create the VRF and return to the Create Network window.

Step 7. In the Create Network window, specify the following:

● Network ID or use default.

● VLAN ID or click Propose VLAN to let system define a VLAN.

● In the General Parameters tab, specify IP Gateway/Netmask, VLAN Name and Interface Description.

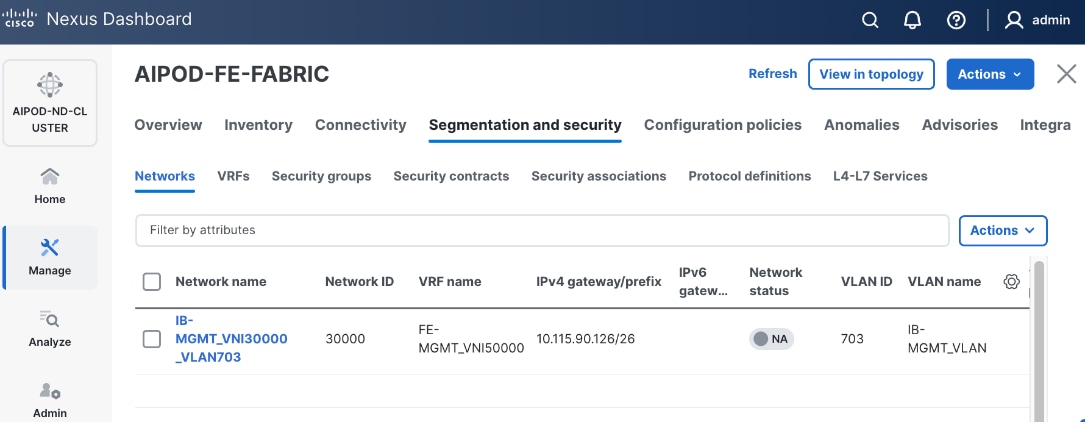

Step 8. Click Create to create the Network.

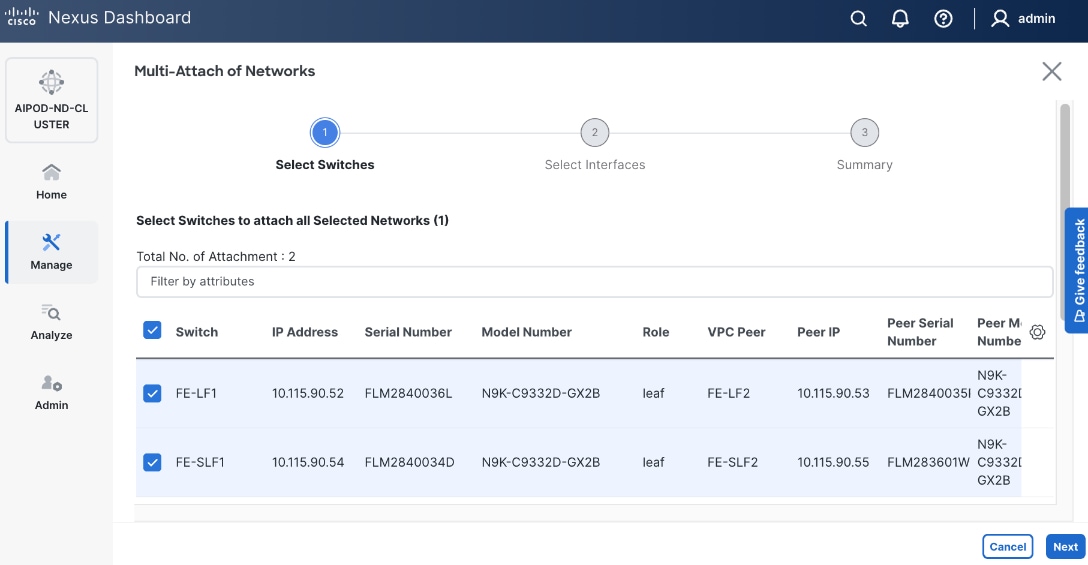

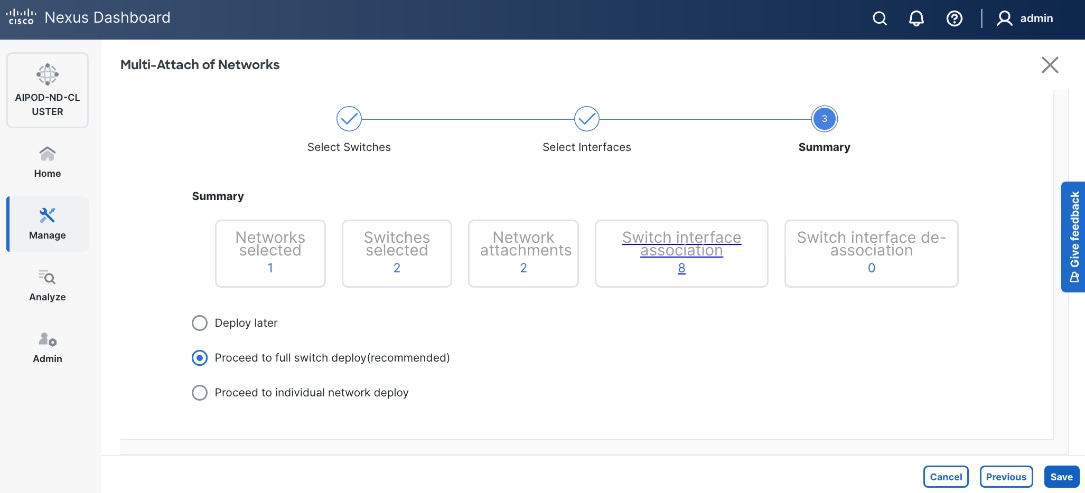

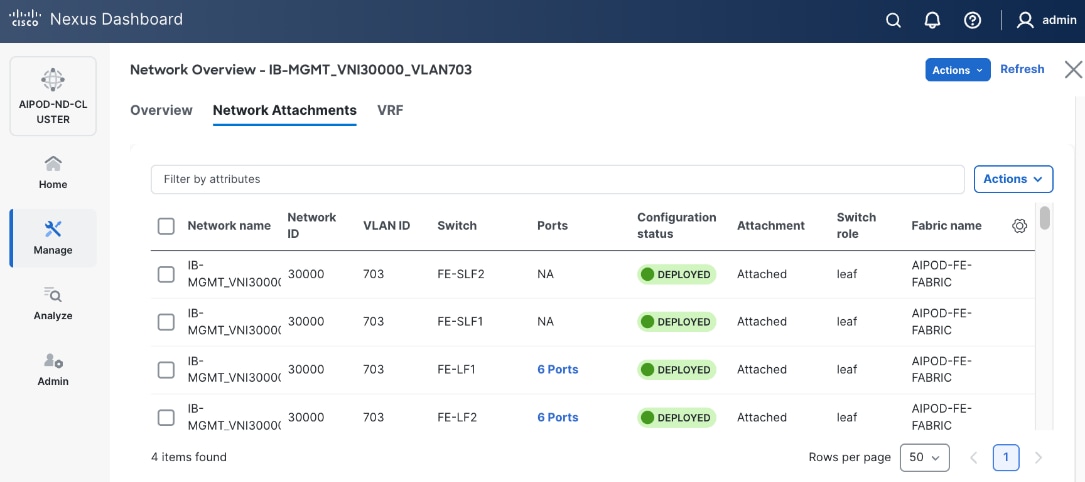

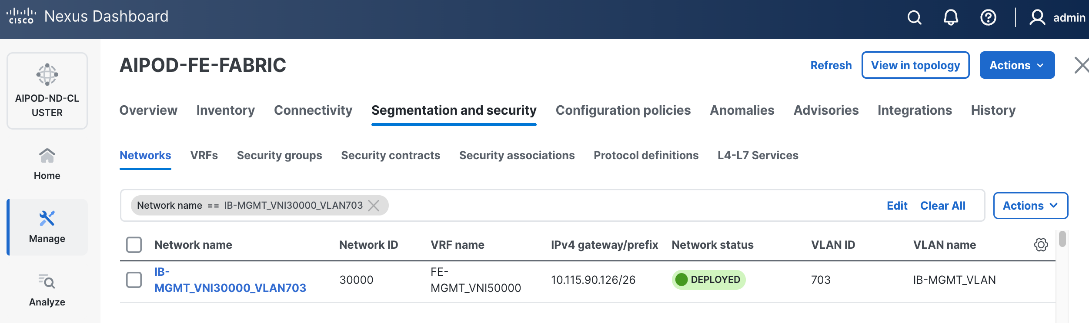

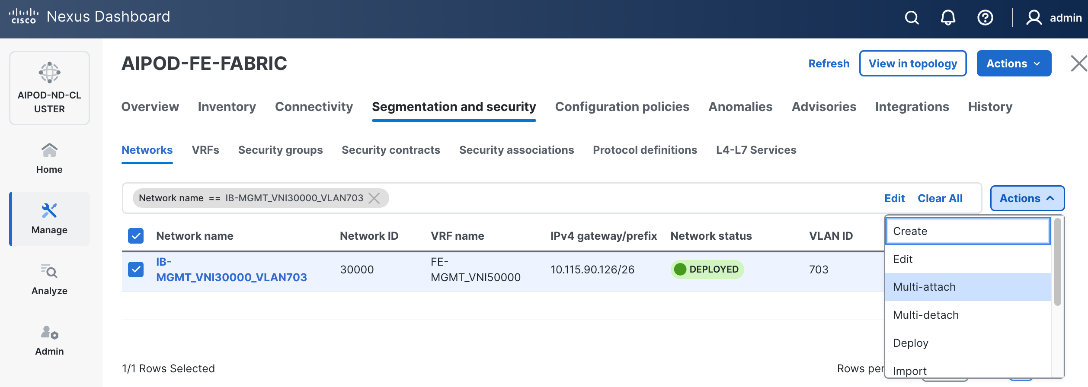

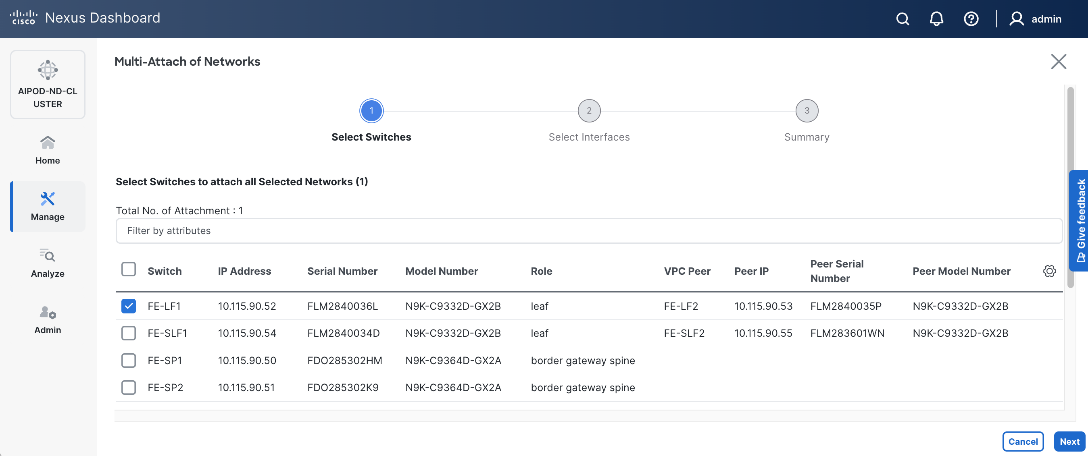

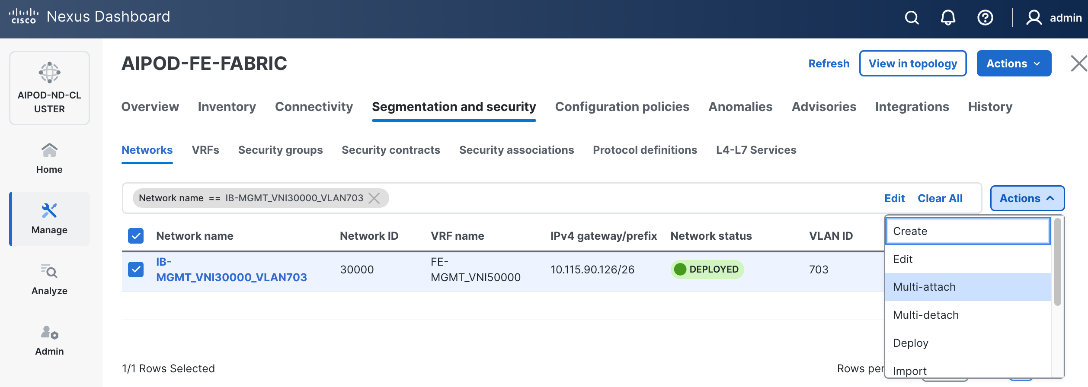

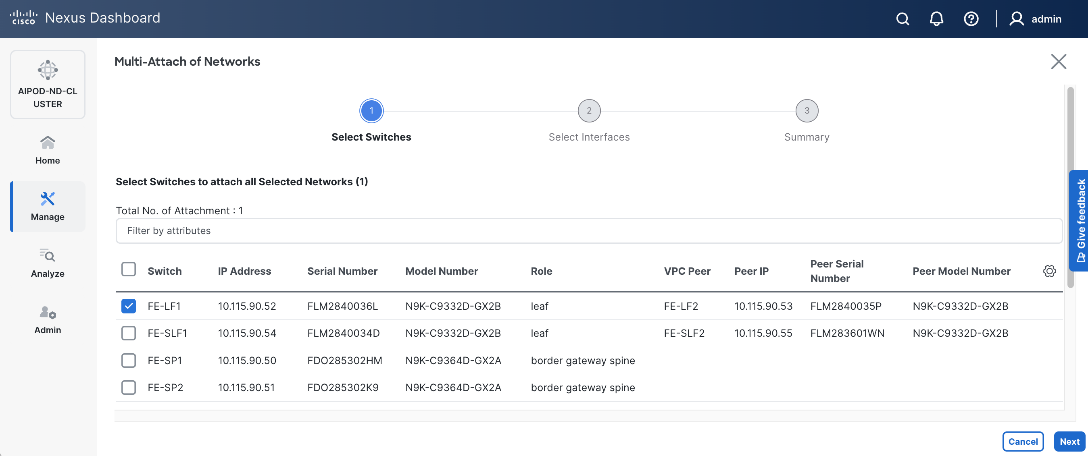

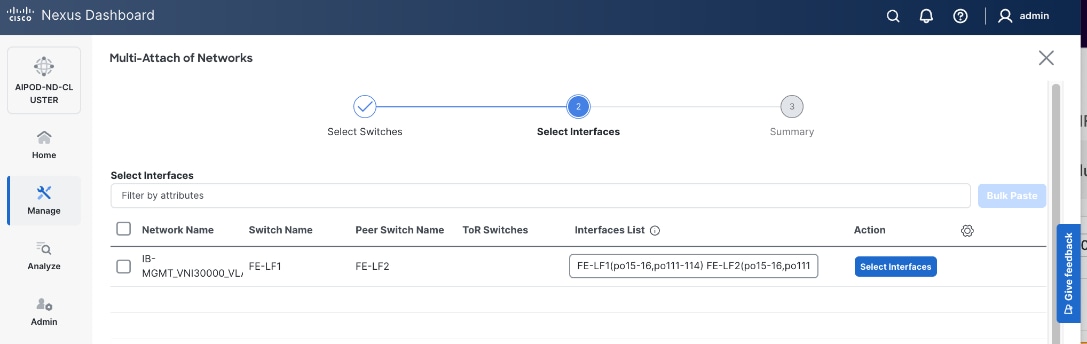

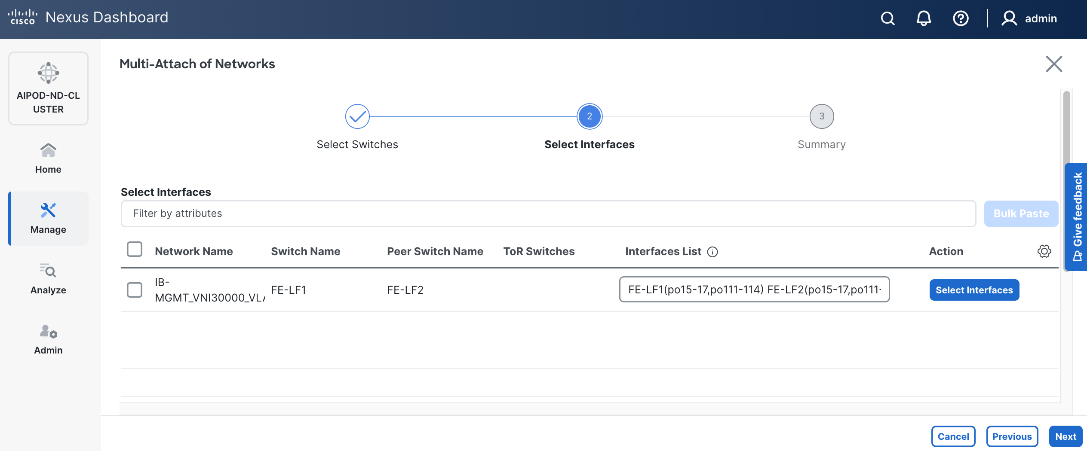

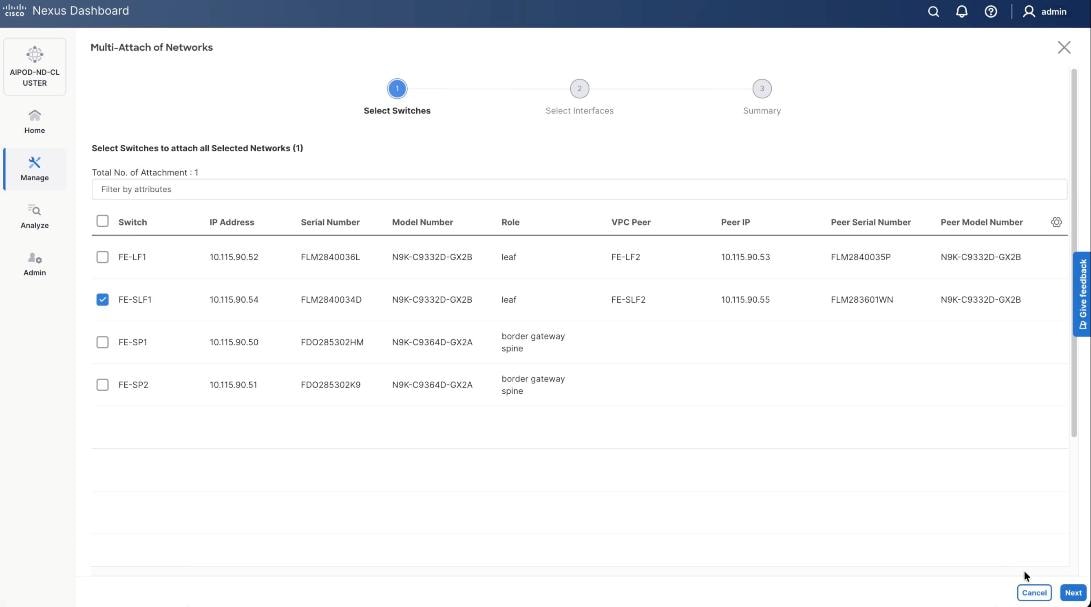

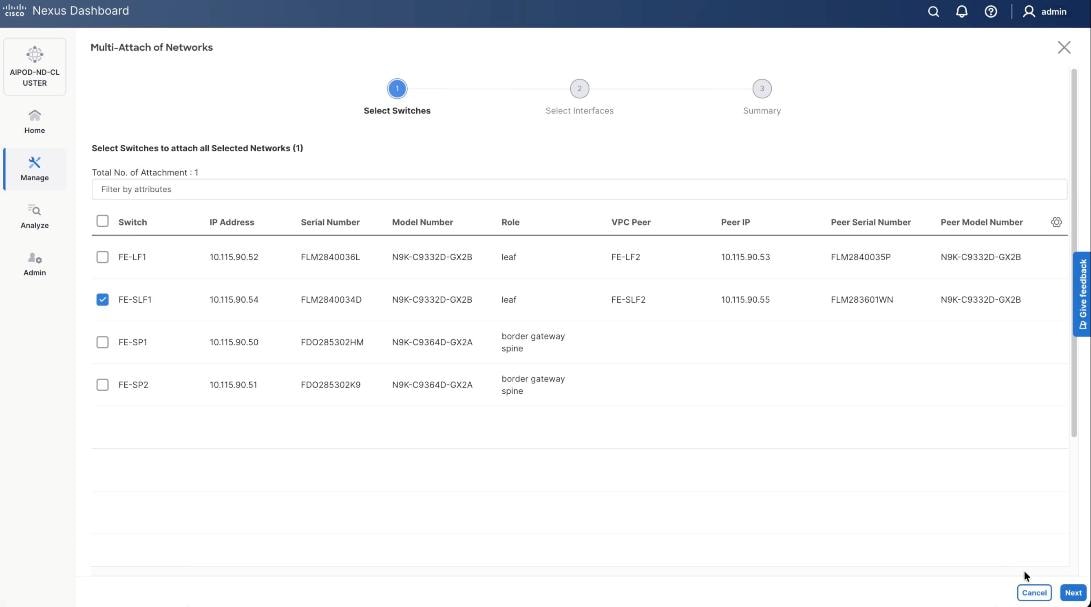

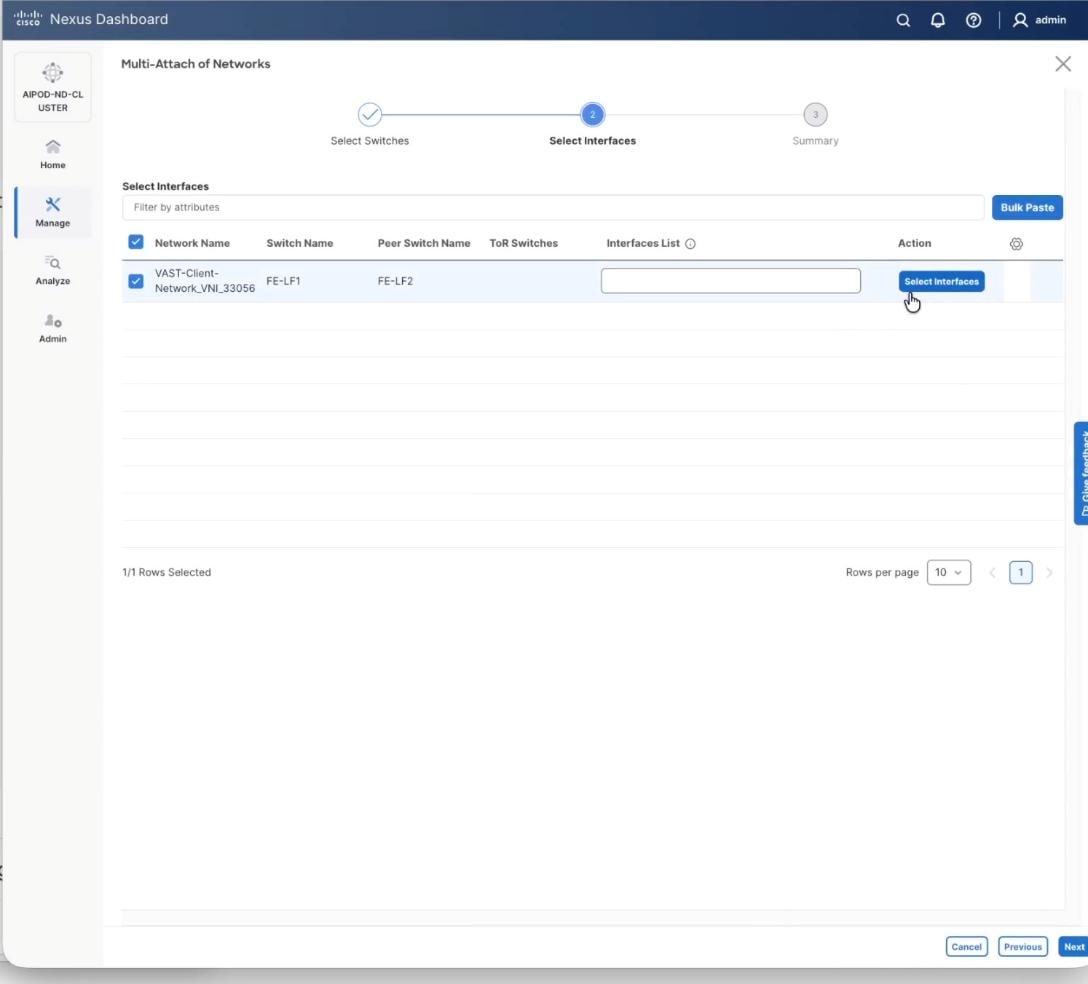

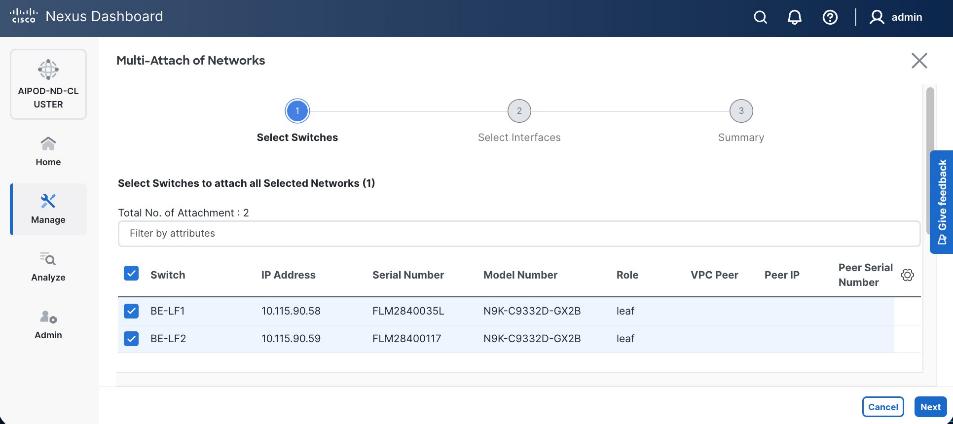

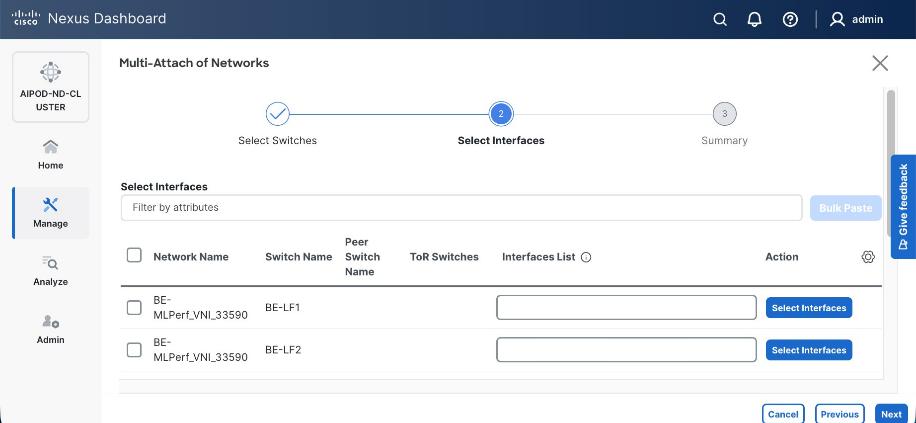

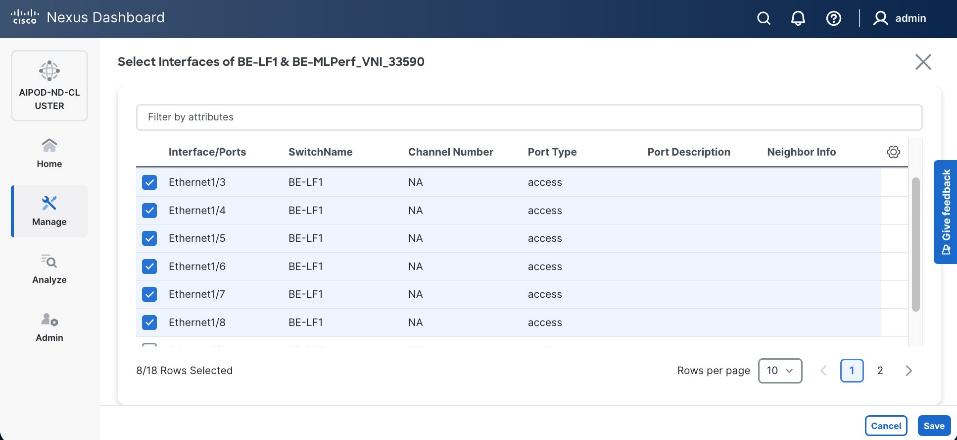

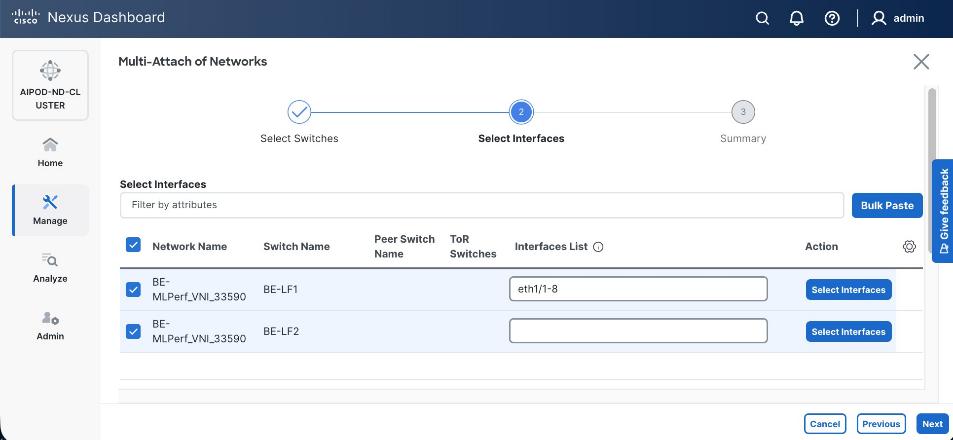

Step 9. Select newly created network and deploy it on both leaf pairs. Click the lower Actions button and select Multi-attach from the list.

Step 10. Select the Leaf switch pairs. Enabling this network on storage leaf pairs as shown below may not be necessary in all deployments.

Step 11. Click Next.

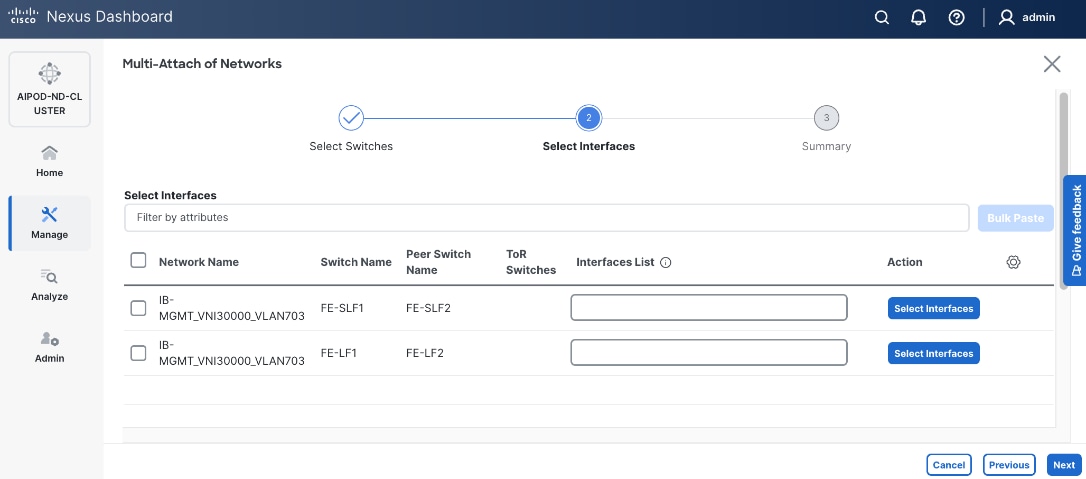

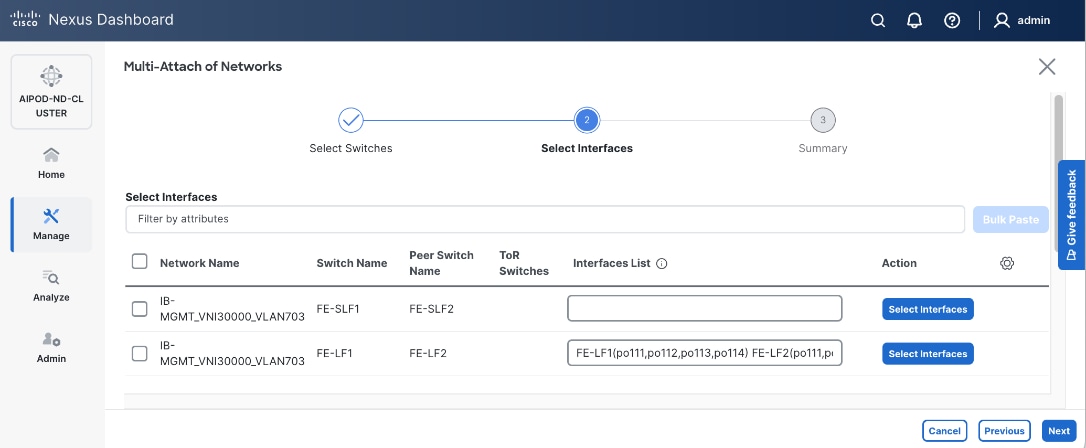

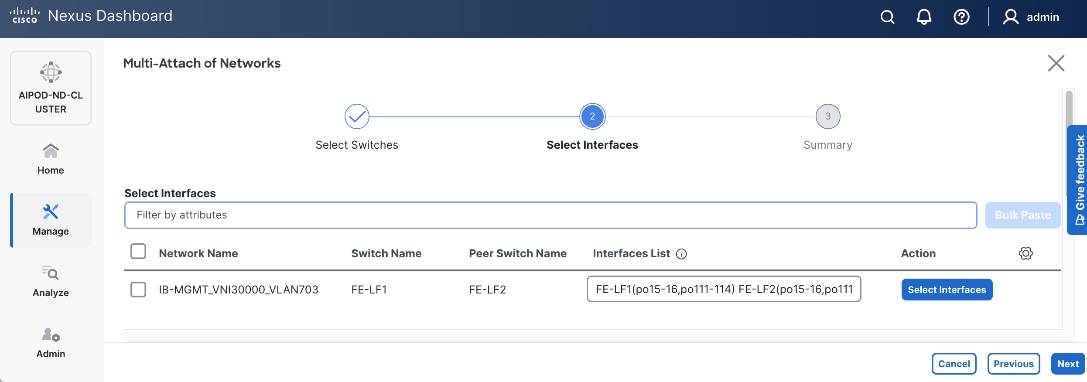

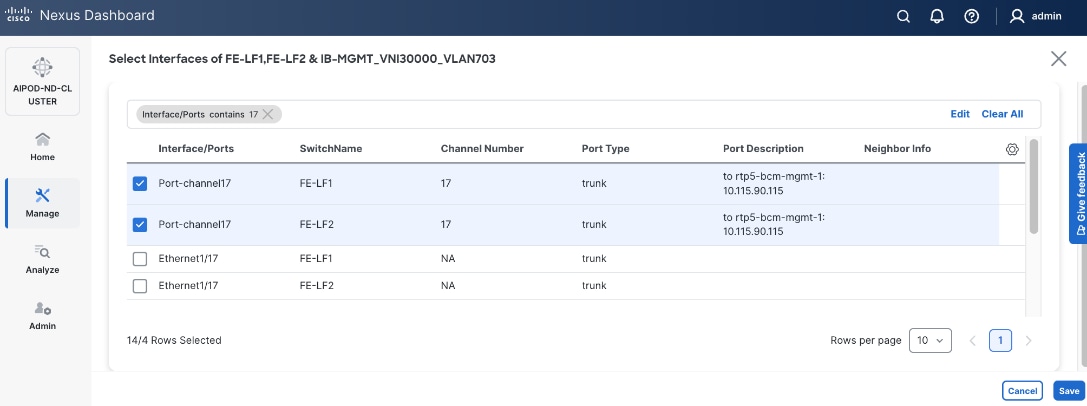

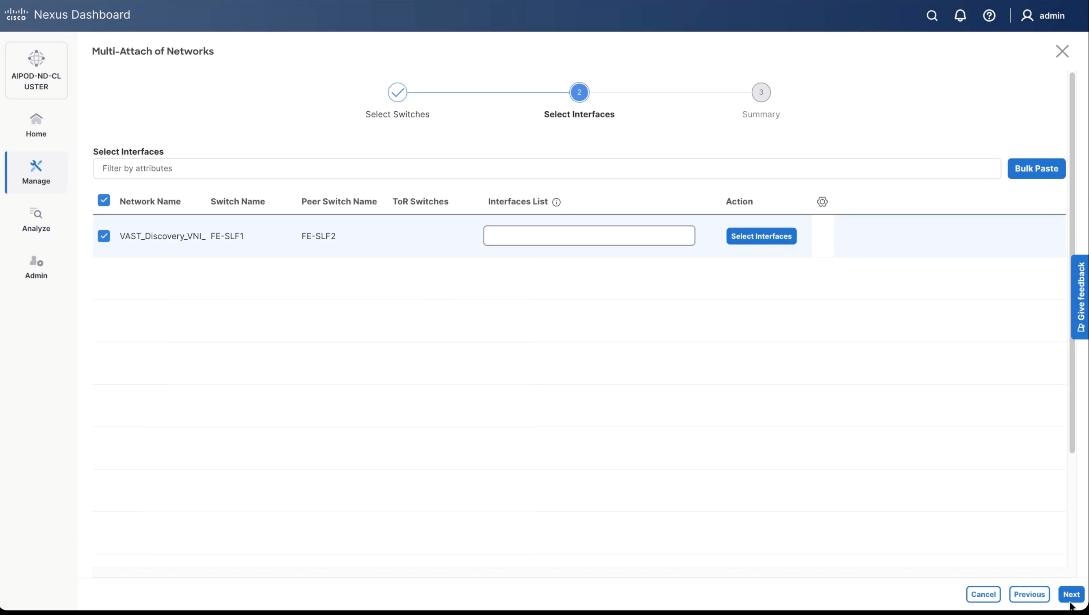

Step 12. Select each switch pair in the list and click Select interfaces to deploy this network as a trunked VLAN (VLAN 703) on the selected interfaces. Select the interfaces on the compute leaf switches that connect to the UCS GPU nodes. Additional interfaces can be added later as needed.

Step 13. Click Next.

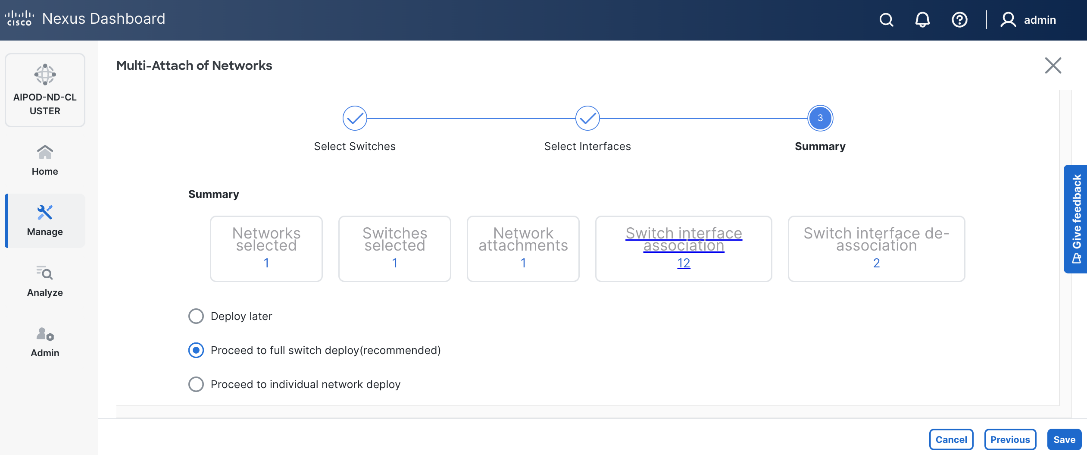

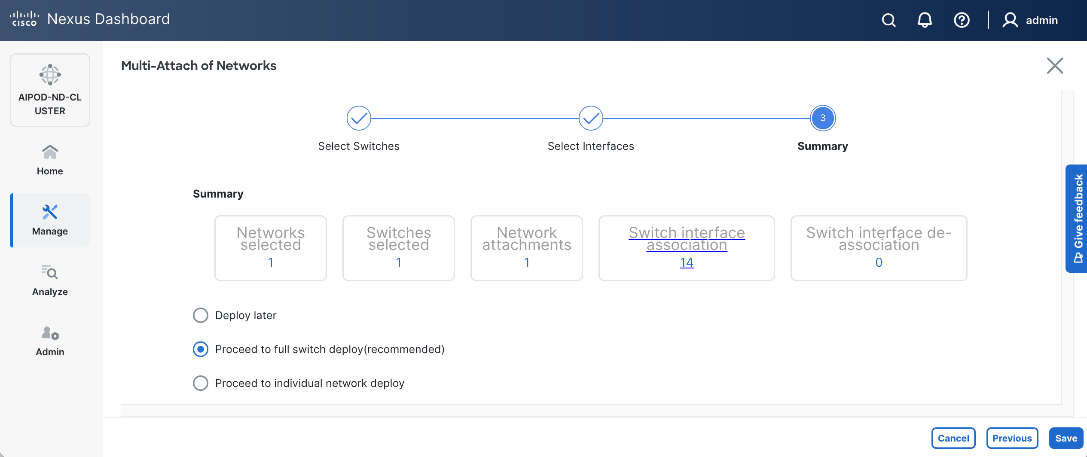

Step 14. Click Save.

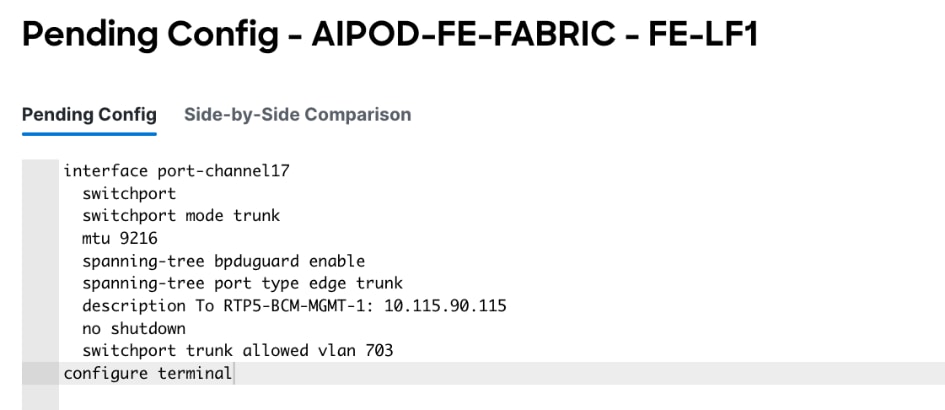

Step 15. Click Pending Config to see the configuration being deployed. The pending configuration on one leaf switch is provided as a reference at the end.

Step 16. Click Deploy All.

Step 17. Click Close.

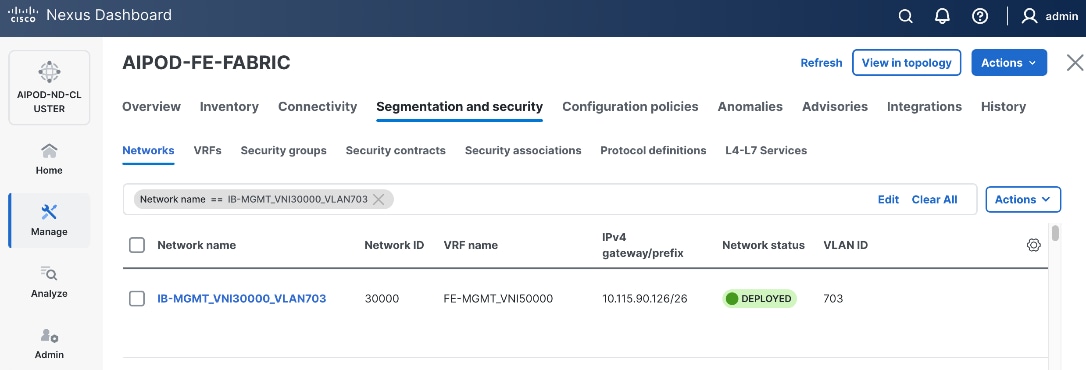

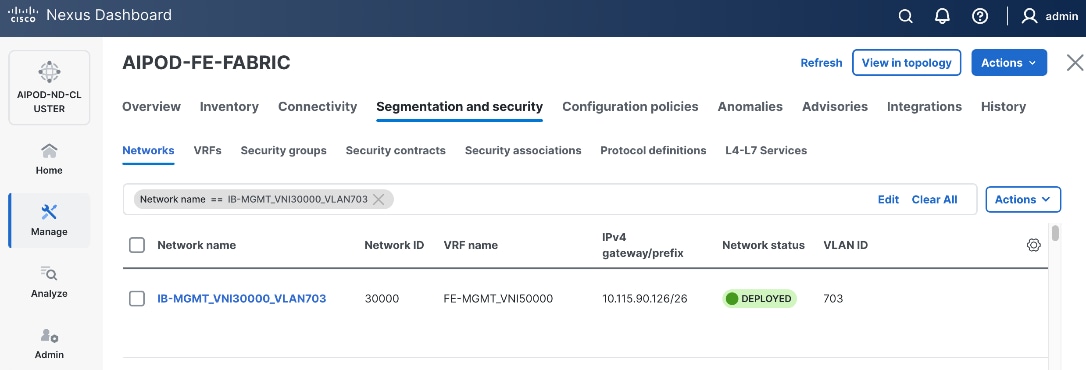

Step 18. Click the Network name to verify that the network was successfully deployed on the relevant switches and interfaces.

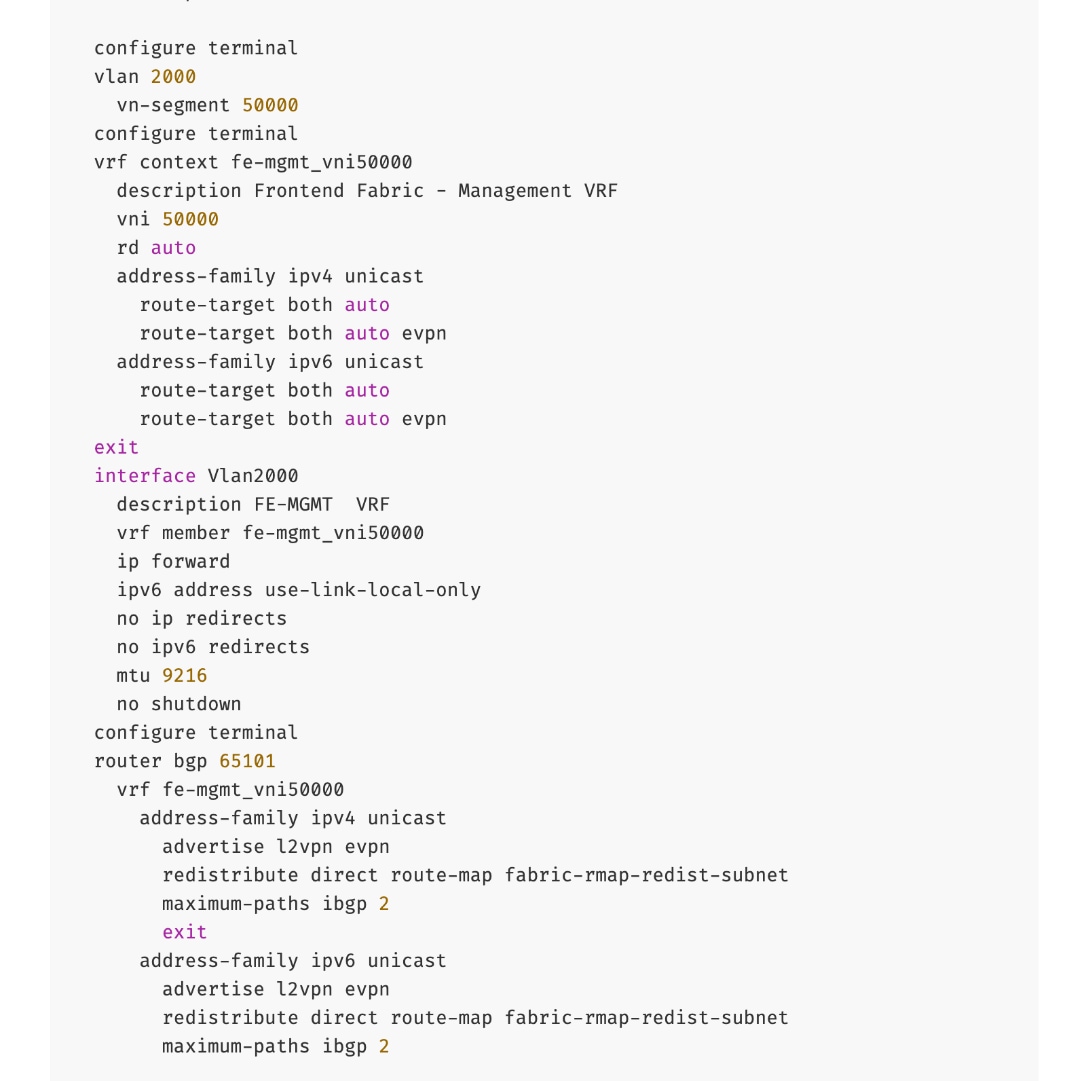

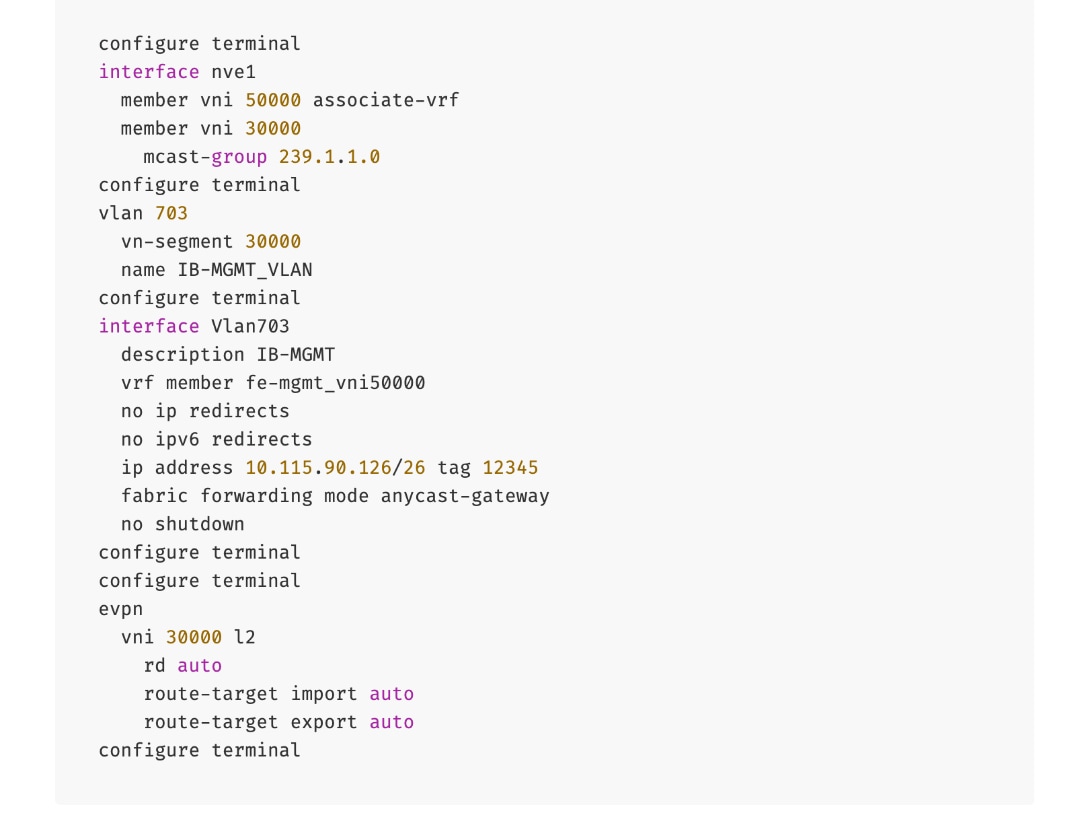

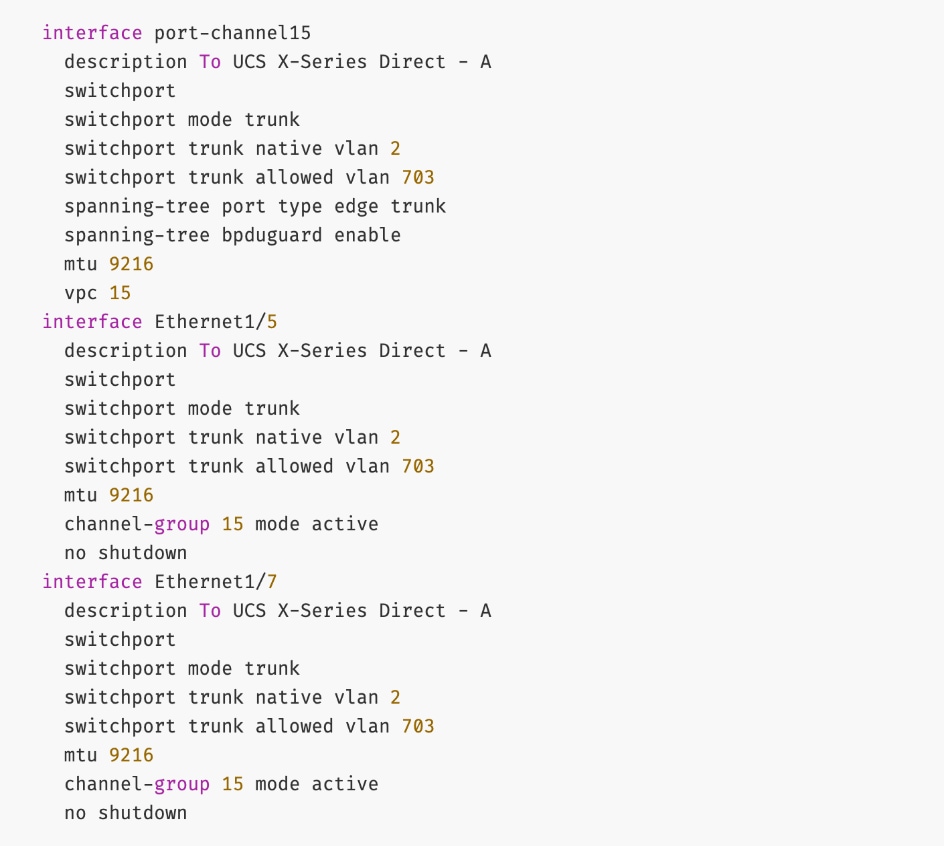

The configuration deployed on one compute leaf switch is provided below:

To deploy in-band management connectivity to Management UCS X-Series Direct on the compute leaf switches in the FE fabric, follow the procedures below.

Procedure 2. Deploy in-band management connectivity for management UCS X-Direct chassis

Step 1. From a web browser go to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and go to Segmentation and Security > Networks tab.

Step 4. Select the previously deployed in-band management network from the list.

Step 5. Click the lower Actions button and select Multi-attach from the list.

Step 6. Select the leaf switch pair from the list which the UCS X-Series Direct system connects.

Step 7. Click Next.

Step 8. Click Select Interfaces to the right of the leaf switch pair to add the interfaces that connect to management UCS X-Series Direct.

Step 9. Click Next.

Step 10. Click Save.

Step 11. Click Deploy All.

Step 12. Click Close.

Step 13. Click the Network name to verify that the network was successfully deployed on the relevant switches and interfaces.

The configuration deployed on one compute leaf switch is provided below as a reference:

(Ubuntu) Enable In-Band Management Connectivity to BCM Node(s)

To deploy in-band management connectivity to BCM node connected to compute leaf switches in the FE fabric, you will be deploying this network on the compute Leaf switch pair that connects to the BCM node.

Table 15. Setup Parameters for FE Fabric: In-Band Management Connectivity to BCME Nodes

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| IB-MGMT Network |

|

|

| Name |

IB-MGMT_VN30000_VLAN703 |

|

| IB-MGMT VRF |

|

|

| VRF Name |

FE-MGMT_VN50000 |

|

| Management BCME Node |

|

|

| vPC Leaf Switch Pair |

FE-LF1, FE-LF2 |

|

| BCM Interface |

Port-Channel 17 |

|

To deploy in-band management connectivity to BCM node connected to compute leaf switches in the FE fabric, follow the procedures below.

Procedure 1. Enable in-band management connectivity to BCM node

Step 1. From a web browser go to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and go to Segmentation and Security > Networks tab.

Step 4. Select the previously deployed in-band management network from the list.

Step 5. Click the lower Actions button and select Multi-attach from the list.

Step 6. Select the leaf switch pair from the list that the BCME node connects.

Step 7. Click Next.

Step 8. Click Select Interfaces to the right of the network name to add the interfaces that connect to the BCM node.

Step 9. Click Save.

Step 10. Click Next.

Step 11. Click Save.

The configuration deployed on one compute leaf switch is provided below as a reference:

Step 12. Click Deploy All.

Step 13. Click Close.

Step 14. Click the Network name to verify that the network was successfully deployed on the relevant switches and interfaces.

Enable Layer 2 Connectivity on FE Fabric for VAST Data on Cisco EBox

This section details configuring the Layer 2 connectivity from the FE fabric to VAST storage.

Table 16. Setup Parameters for FE Fabric: VAST Internal Storage Network and VAST External Network

| Parameter Type |

Parameter Name | Value |

| Name |

VAST-Storage-Network_VNI_30069 |

| Layer 2 Only |

Enable checkbox |

| Network ID |

30069 |

| VLAN ID |

69 |

| VLAN Name |

VAST-Storage_VLAN_69 |

| Interface Description |

VAST-Client-Network_VNI_30069, vast internal network traffic |

| Name |

VAST-Discovery_VNI_30010 |

| Layer 2 Only |

Enable checkbox |

| Network ID |

30010 |

| VLAN ID |

10 |

| VLAN Name |

VAST-Storage_VLAN_10 |

| Interface Description |

VAST-Discovery_VNI_30010, VAST cluster node discovery VLAN, native VLAN on vast storage port |

| VAST Client network |

VAST-Client-Network_VNI_33056 |

| Layer 2 Only |

Enable checkbox |

| Network ID |

33056 |

| VLAN ID |

3056 |

| VLAN Name |

VAST-Client_VLAN_3056 |

| Interface Description |

VAST-Storage-Network_VNI_33056 |

Table 17. FE Fabric ports for Layer 2 Connectivity to Cisco EBox nodes

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| Leaf Switches |

FE-SLF1, FE-SLF2 |

|

| VAST Storage |

|

To Storage Leaf Switches |

| FE-SLF1 |

|

|

| VAST internal Storage network Ports |

1/9 to 1/14 |

Each 400GbE port is configured as 2x 200GbE breakout port |

| VAST External network Ports |

1/15 to 1/20 |

Each 400GbE port is configured as 2x 200GbE breakout port |

| FE-SLF2 |

|

|

| VAST internal storage Network Ports |

1/9 to 1/14 |

Each 400GbE port is configured as 2x 200GbE breakout port |

| VAST External network Ports |

1/15 to 1/20 |

Each 400GbE port is configured as 2x 200GbE breakout port |

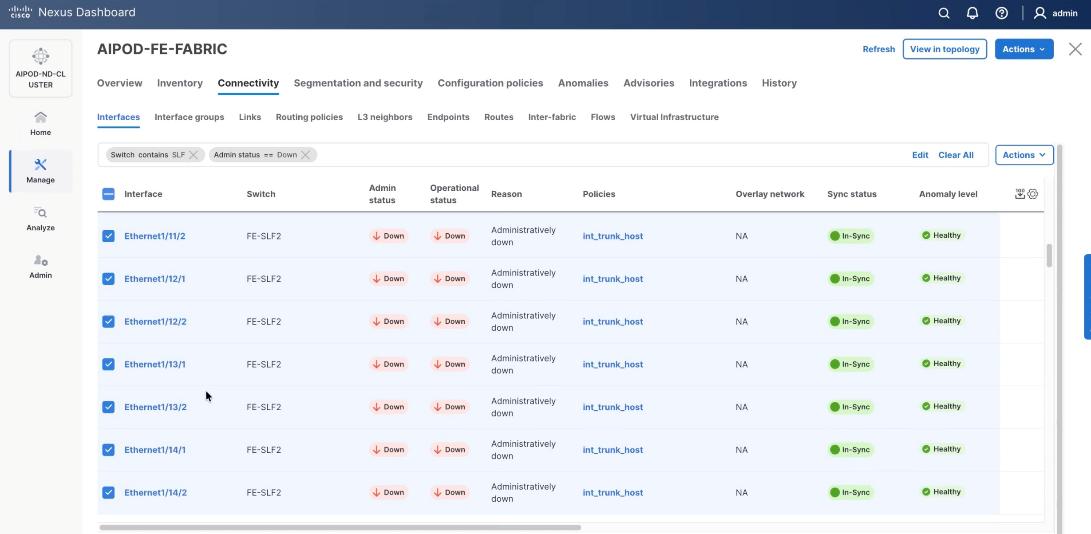

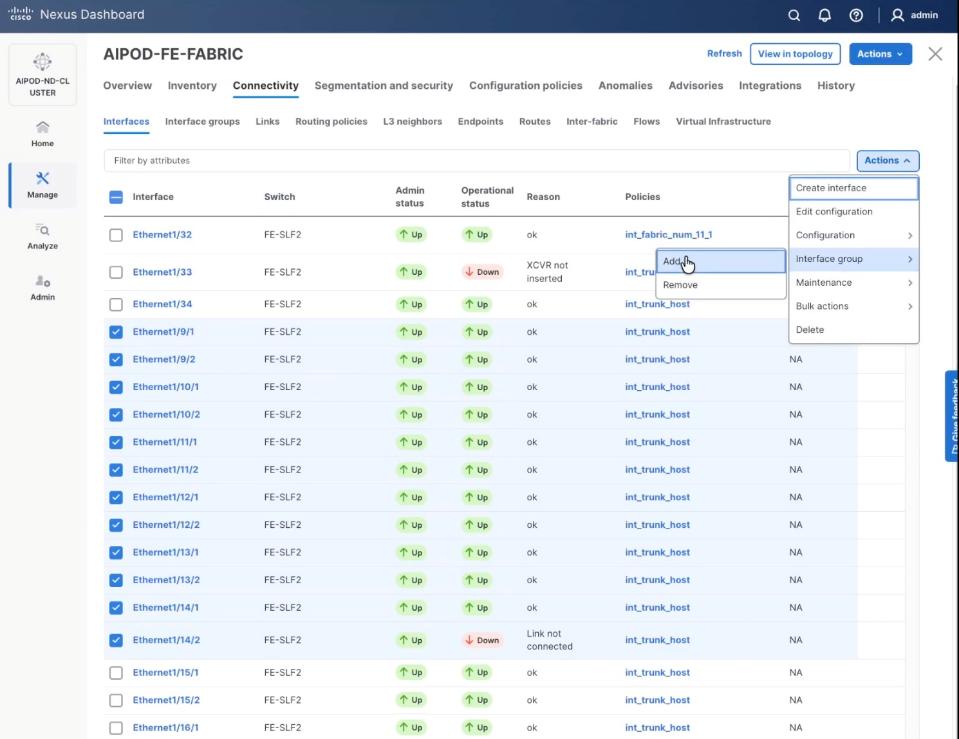

To enable Layer 2 connectivity from the FE fabric to VAST EBox nodes, follow the procedures below.

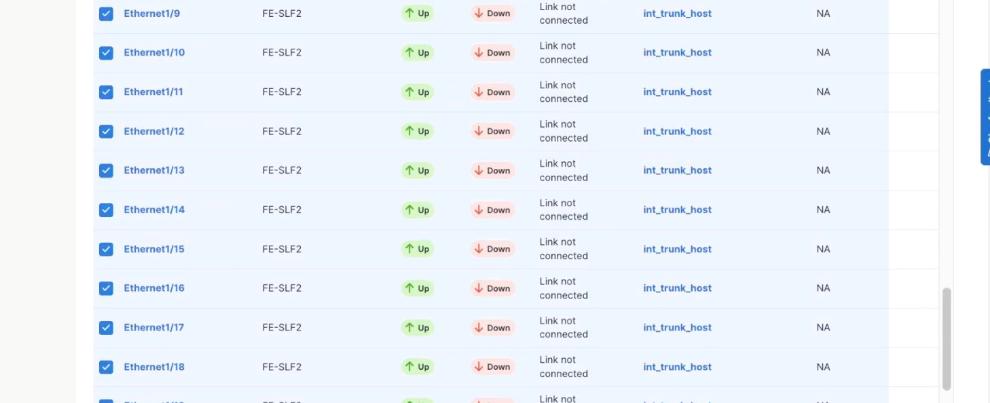

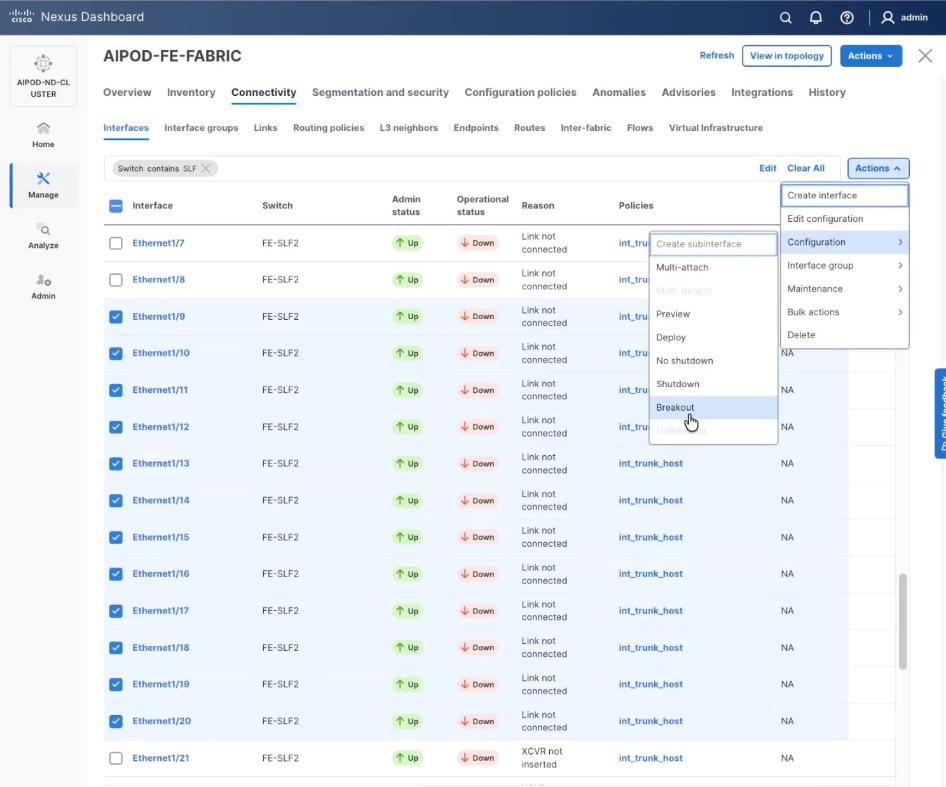

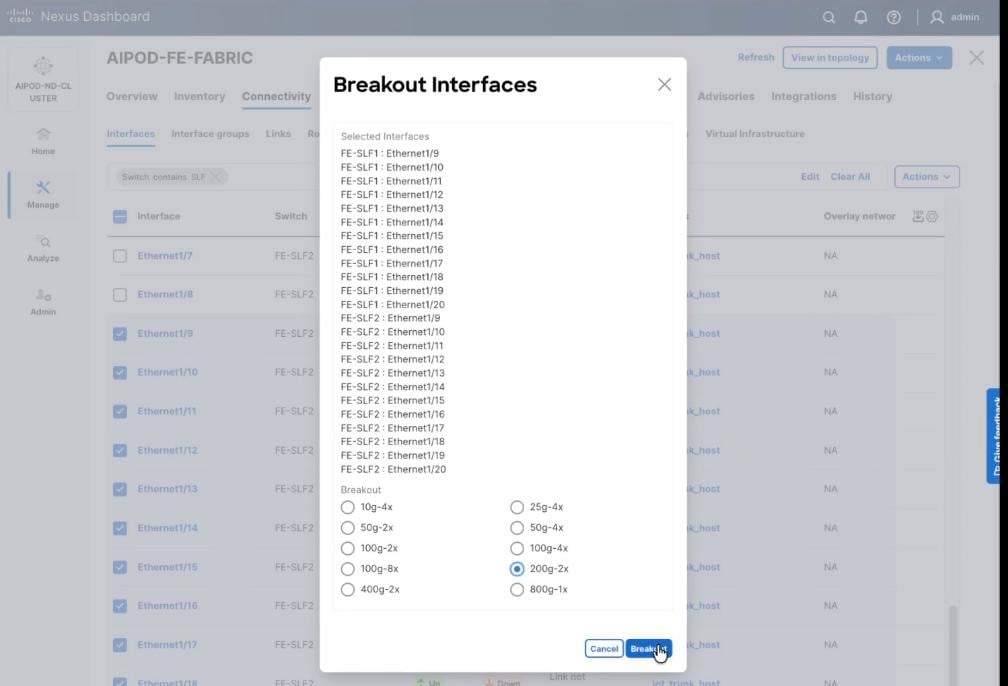

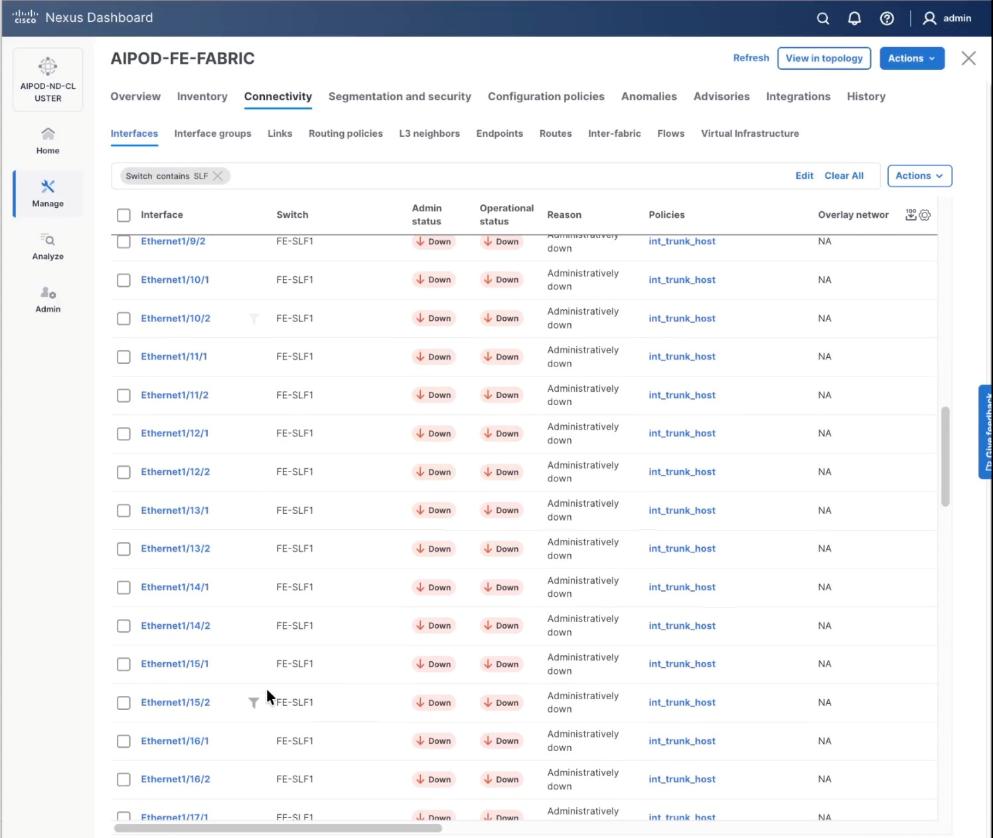

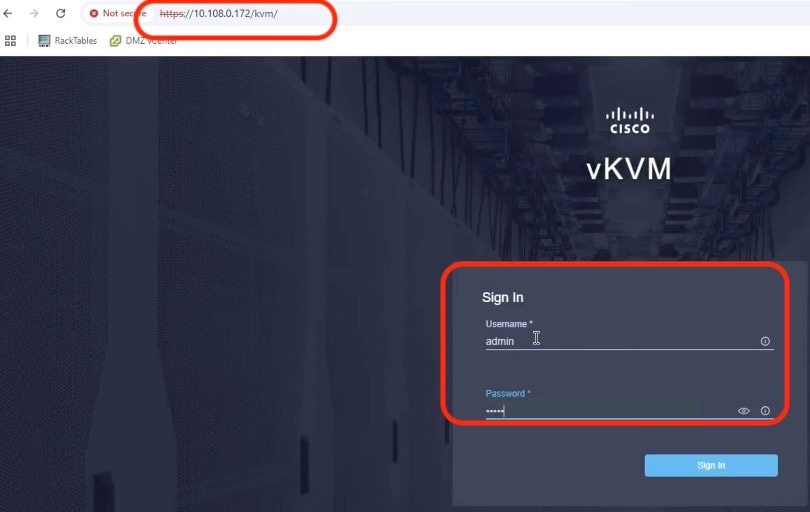

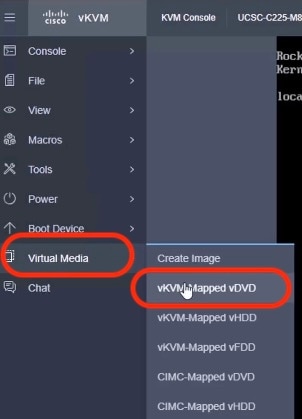

Procedure 1. Configure breakout ports on Storage leaf switches

Step 1. From a web browser go to the Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and go to Connectivity > Interfaces tab.

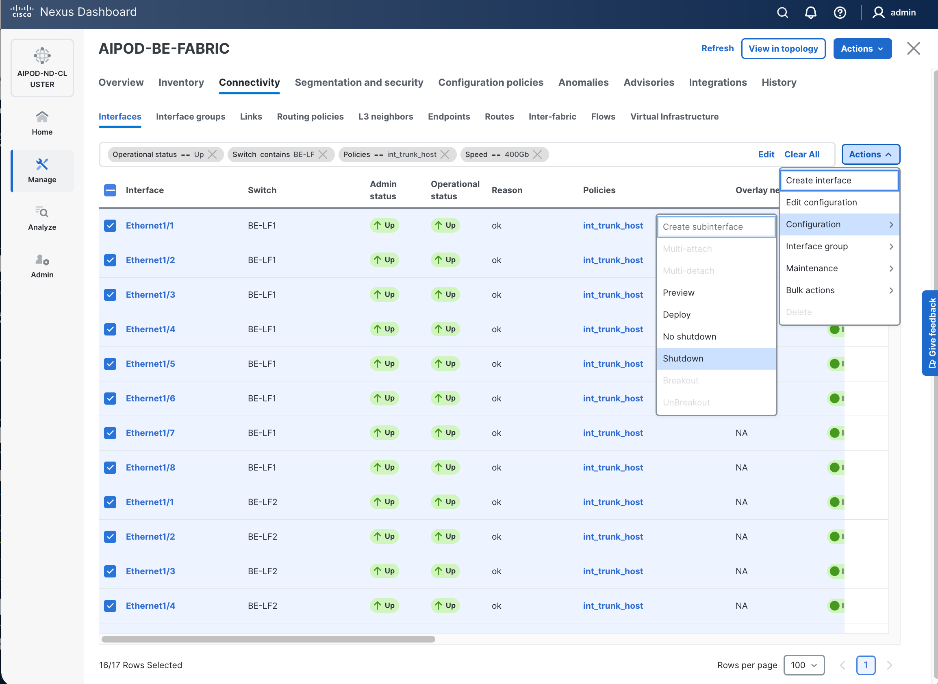

Step 4. Select Filter on the storage leaf switches (SLF) and select the VAST internal storage ports that connect to VAST EBox nodes. From the Actions drop-down list, select Interface Group > Add.

Step 5. Click Action and select Configuration > Breakout.

Step 6. Select 200g-2x and click Breakout.

Step 7. Verify the 200G breakout ports are configured successfully.

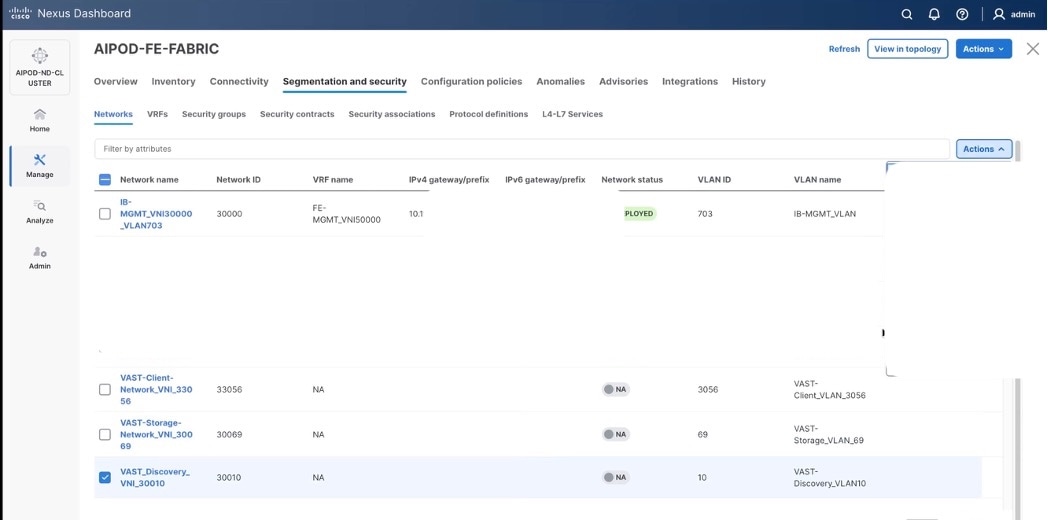

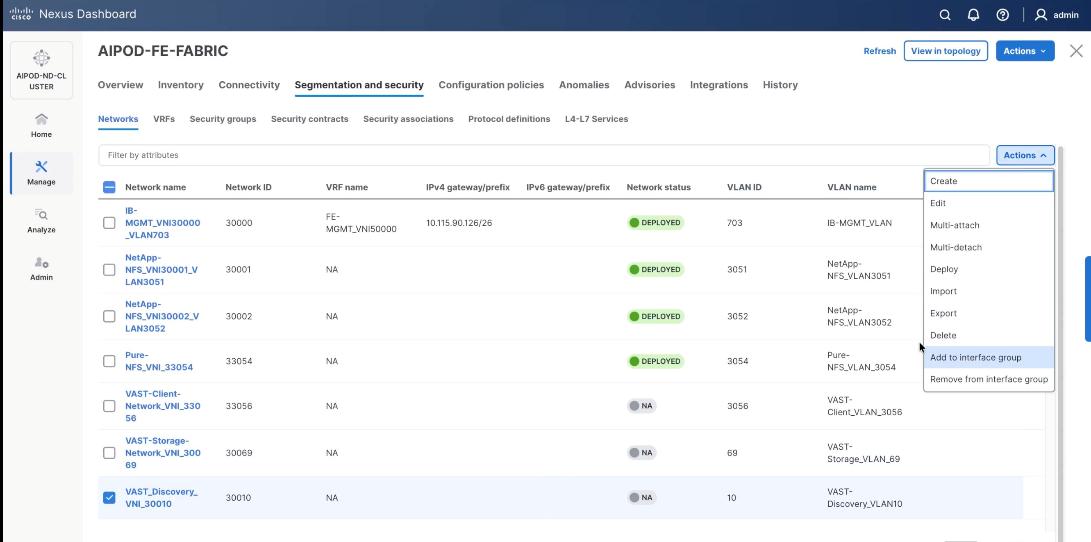

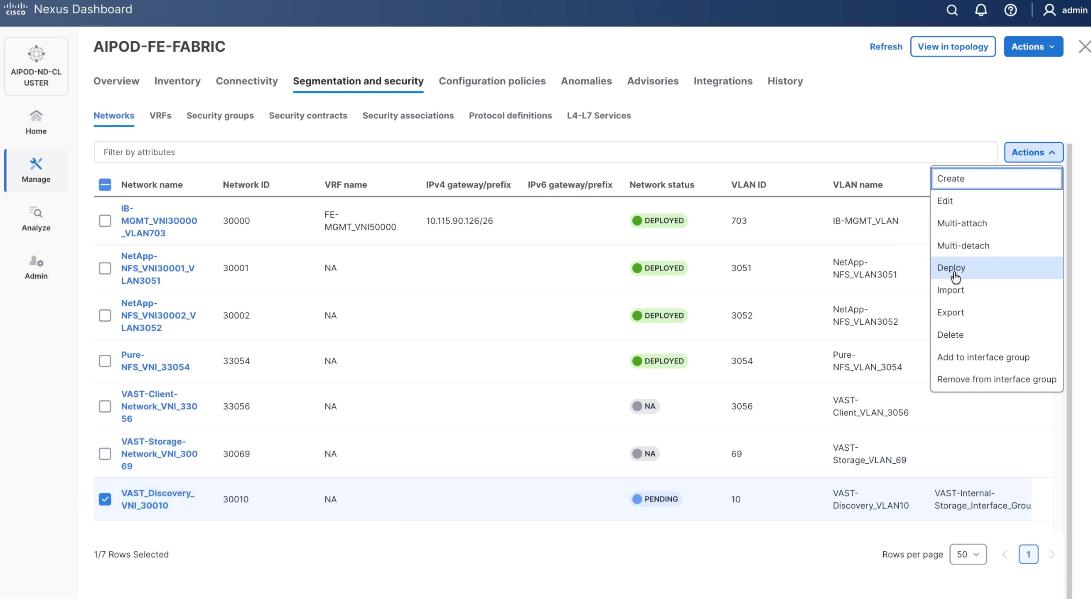

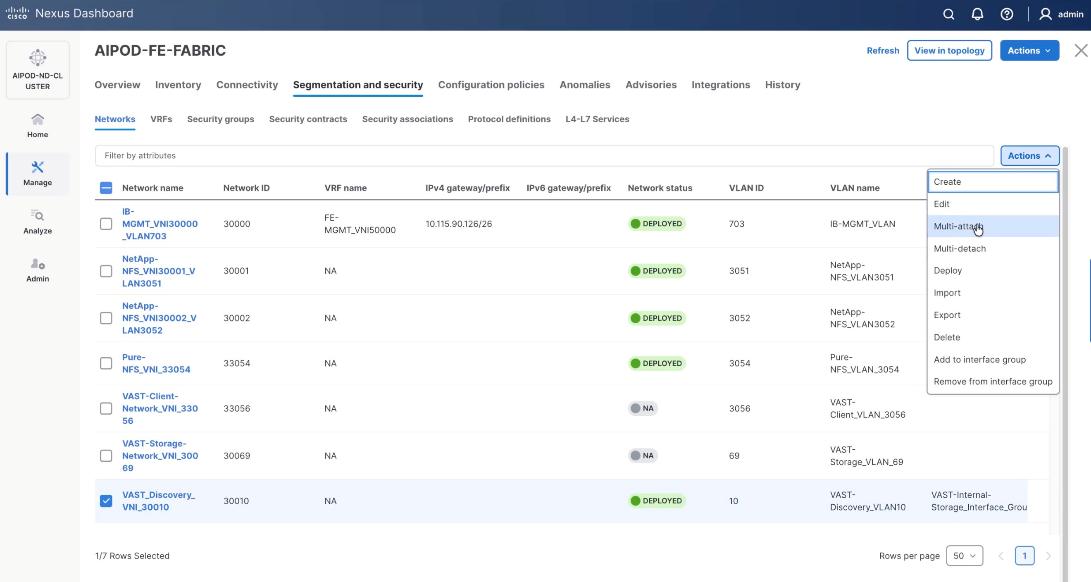

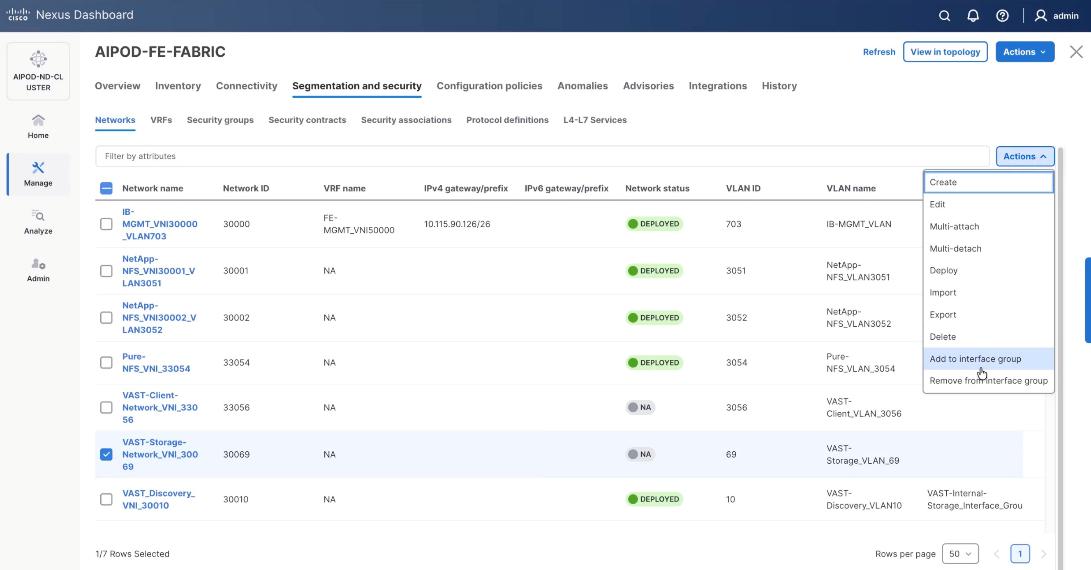

Procedure 2. Create Networks for VAST Data

The section details the creation of network deployed for VAST Data. The three networks created are:

● VAST-Client-Network_VNI_30069

● VAST-Discovery_VNI_30010

● VAST-Client-Network_VNI_33056

Step 1. From a web browser go to the Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

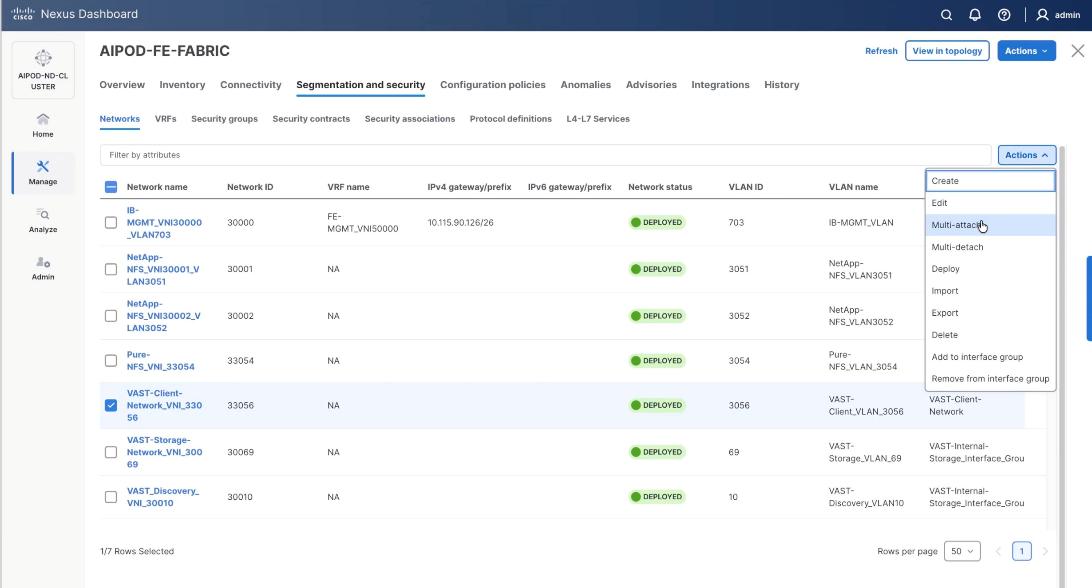

Step 2. From the navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and go to Segmentation and Security > Networks tab.

Step 4. Click the lower Actions button and select Create from the menu.

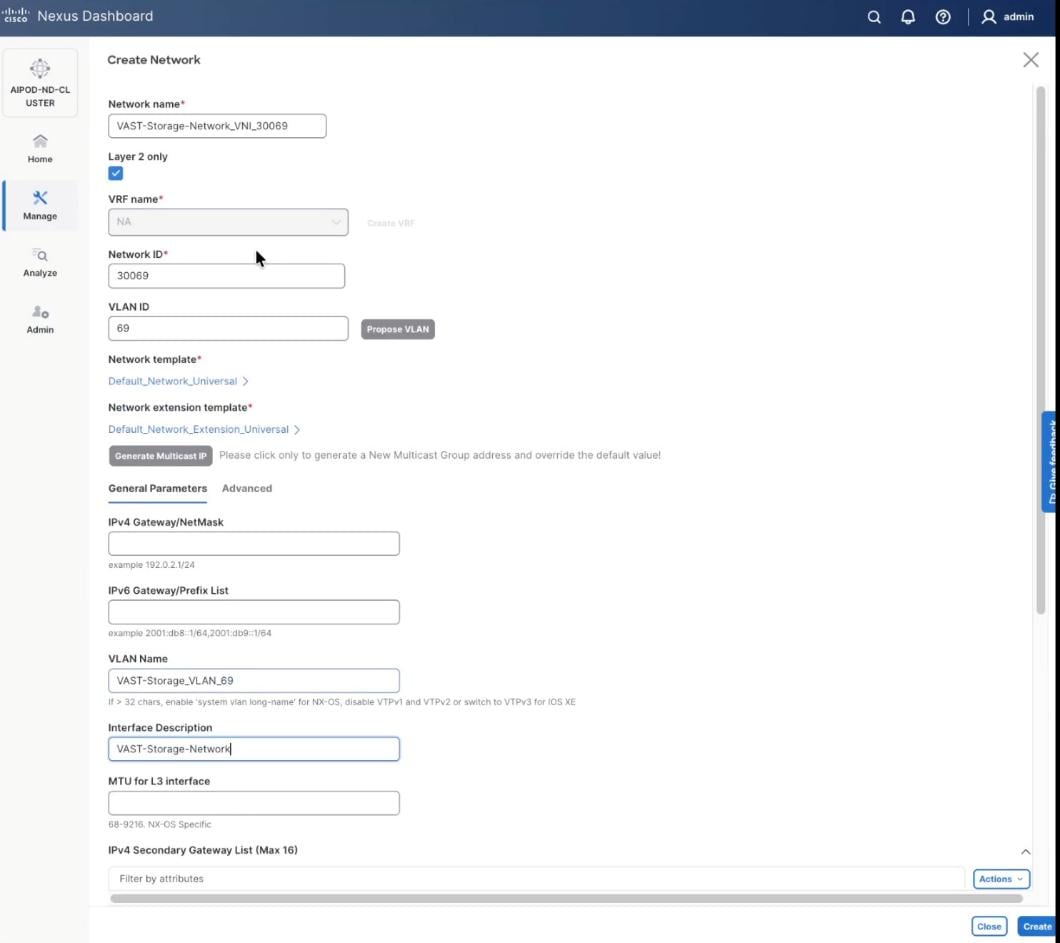

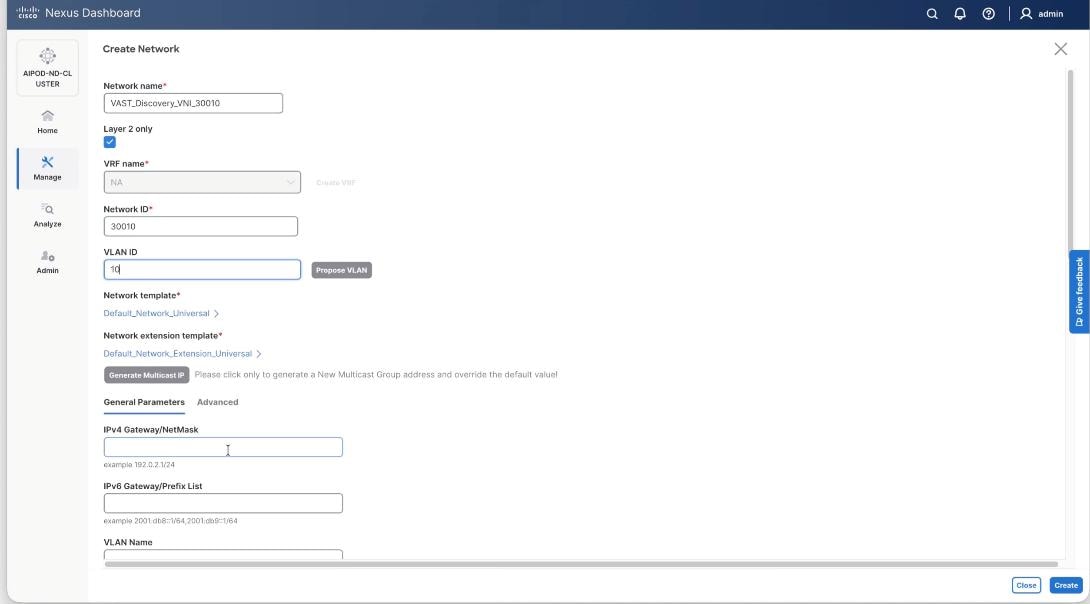

Step 5. In the Create Network window, specify the following:

● Network name: VAST-Storage-Network_VNI_30069

● Enable checkbox for Layer 2 only.

● Network ID or use 30069.

● VLAN ID 69.

● In the General Parameters tab, specify VLAN Name and Interface Description.

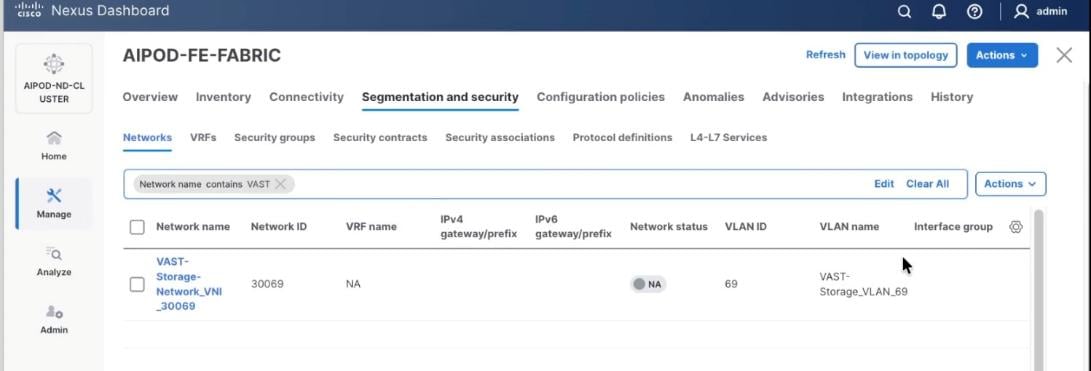

Step 6. Verify created network.

Step 7. Repeat steps 1 – 6 to create VAST Discovery network. From a web browser go to the Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 8. From the navigation menu, go to Manage > Fabrics.

Step 9. Select the FE fabric and go to Segmentation and Security > Networks tab.

Step 10. Click the lower Actions button and select Create from the menu.

Step 11. In the Create Network window, specify the following:

● Network name: VAST-Discovery_VNI_30010

● Enable checkbox for Layer 2 only.

● Network ID or use 30010.

● VLAN ID 10.

● In the General Parameters tab, specify VLAN Name and Interface Description.

Step 12. Confirm creation of the network.

Step 13. Repeat steps 1 – 12 to create VAST Client network. Click the lower Actions button and select Create from the menu.

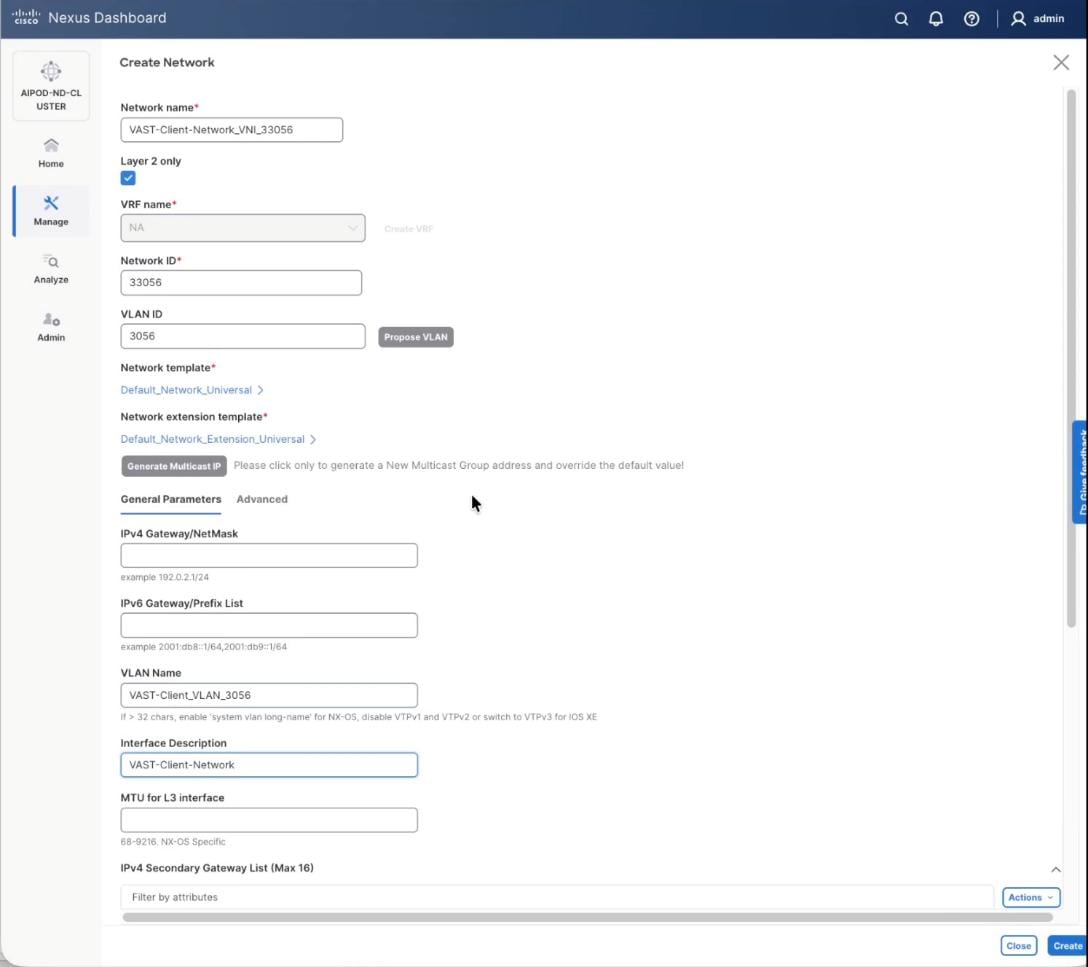

Step 14. In the Create Network window, specify the following:

● Network name: VAST-Client-Network_VNI_33056

● Enable checkbox for Layer 2 only

● Network ID or use 33056

● VLAN ID 3056

● In the General Parameters tab, specify VLAN Name and Interface Description

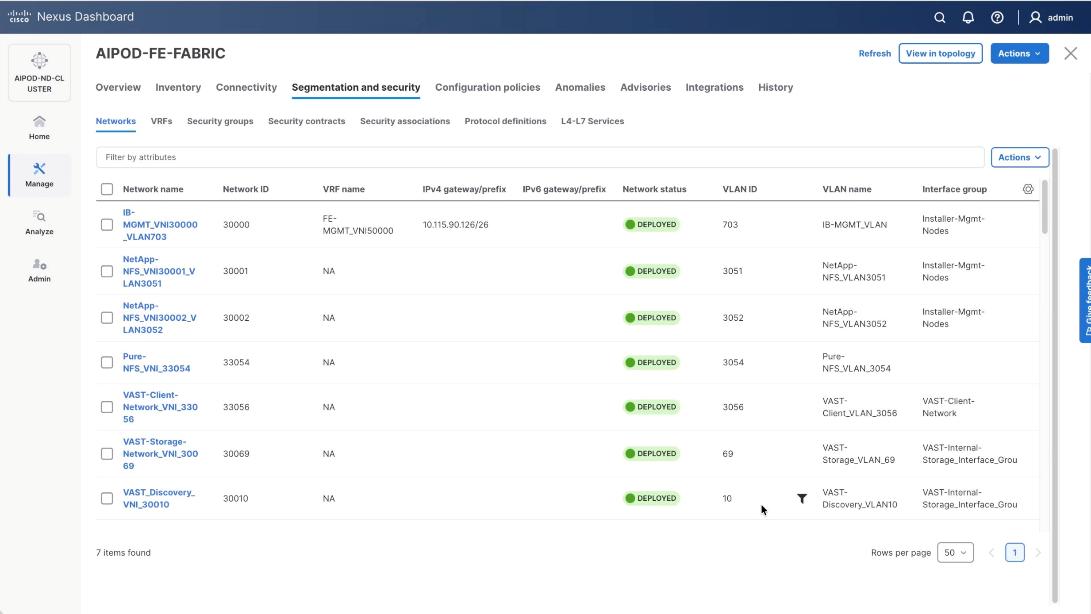

Step 15. Confirm creation of all three networks deployed in this solution.

Procedure 3. Deploy VAST Internal storage network

This procedure details the following:

● Configure VAST native Discovery VLAN for ports 1/9/1 to port 1/14/2 on each leaf switch (FE-SLF1 and FE-SLF2).

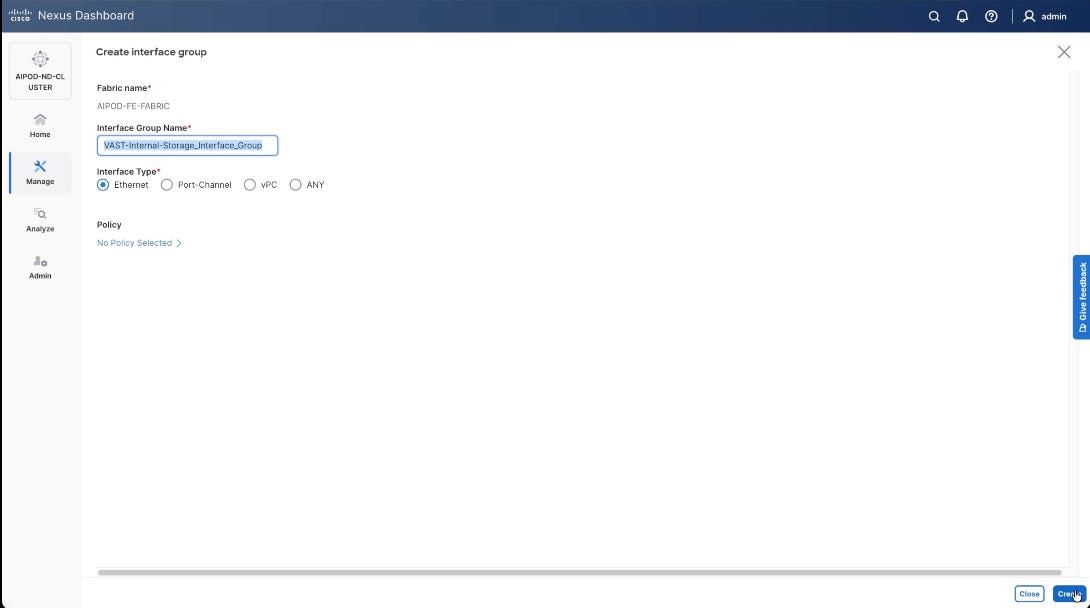

● Add ports to Interface groups: VAST-Internal-Storage_Interface_Group

● Attach VAST-Discovery_VNI_30010, add network to interface group and Deploy network on the storage leaf switches of the FE Fabric (FE-SLF1 and FE-SLF2)

● Attach VAST-Storage-Network_VNI_30069, add network to interface group and Deploy network on the storage leaf switches of the FE Fabric (FE-SLF1 and FE-SLF2)

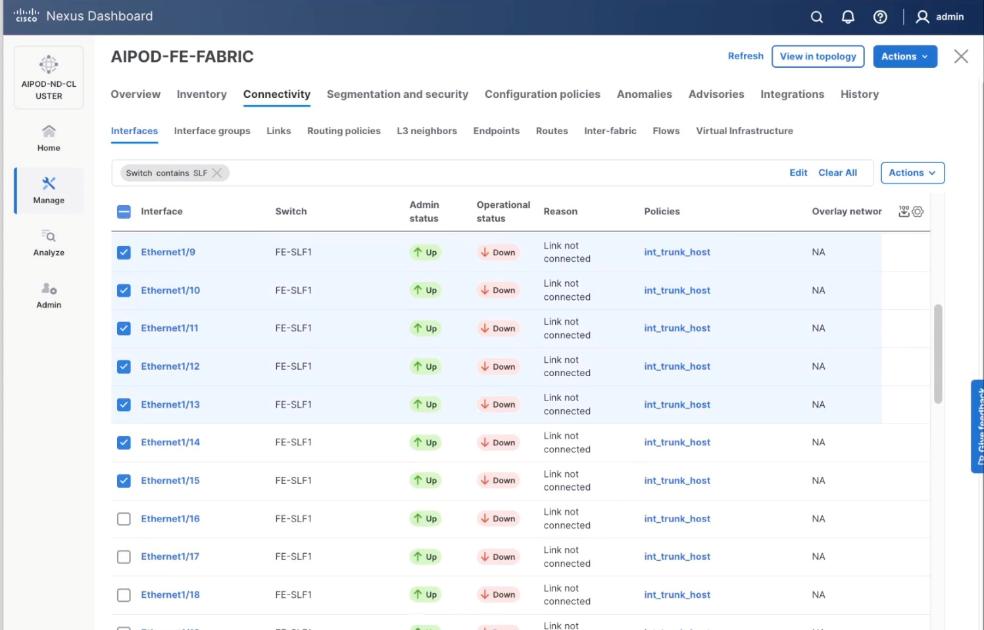

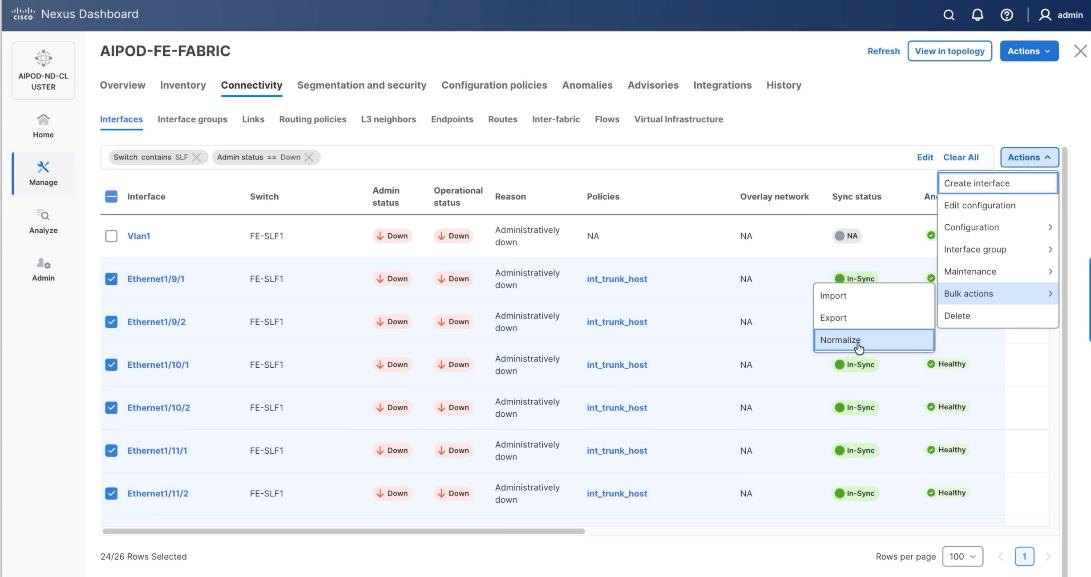

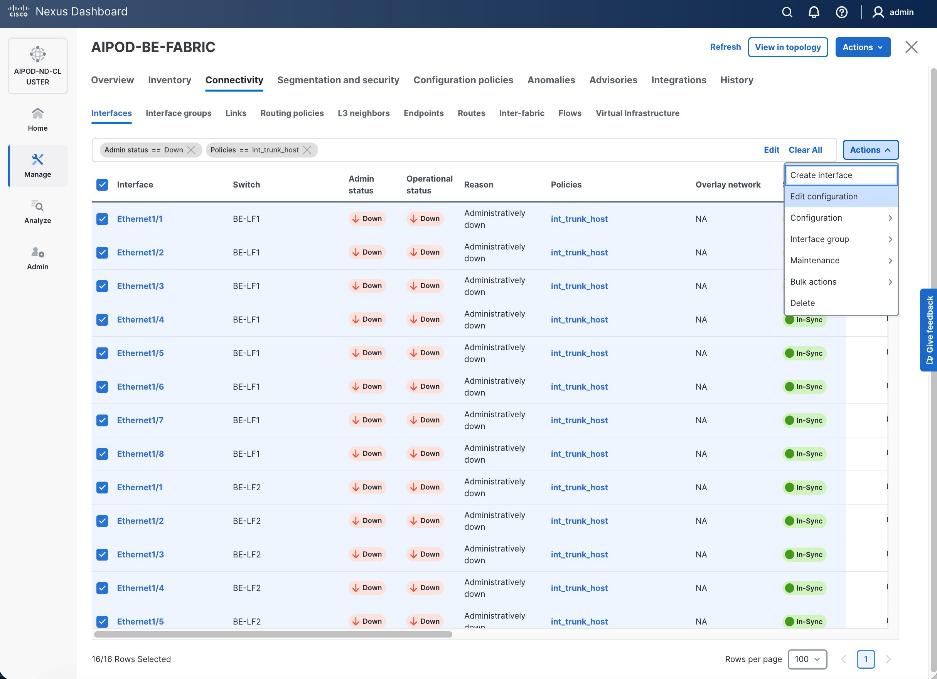

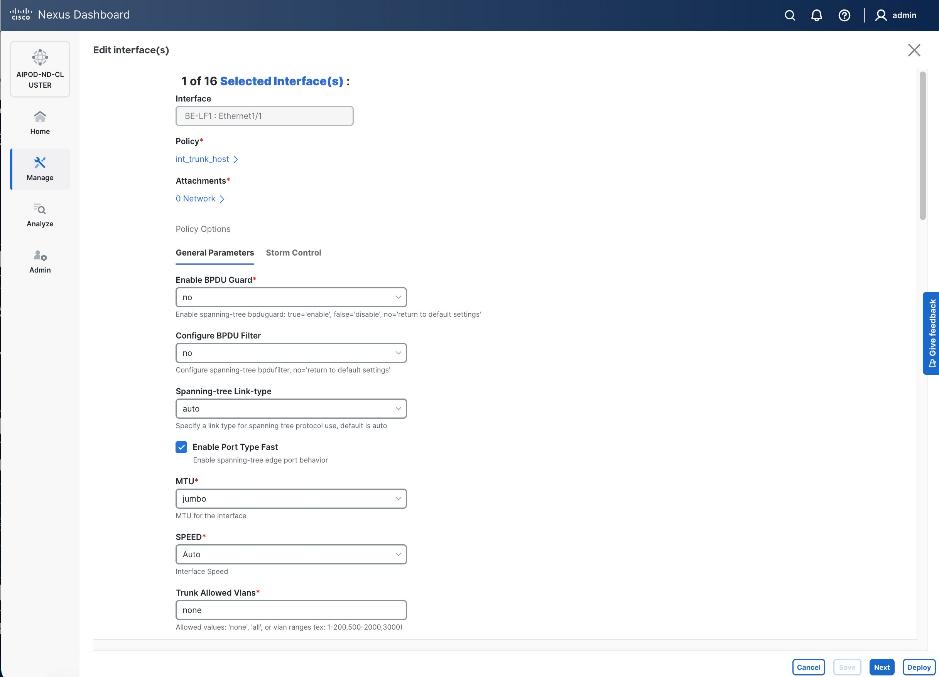

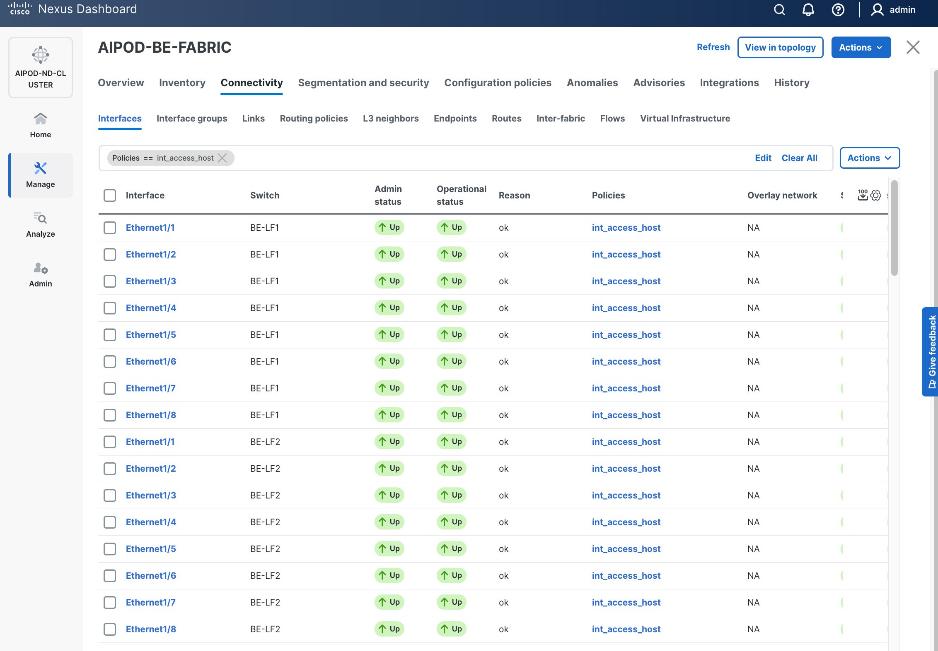

Step 1. Go to the Connectivity tab and select the ports 1/9/1 to 1/14/2 (VAST internal storage ports) . You can filter using storage leaf name (SLF) to narrow down the selection.

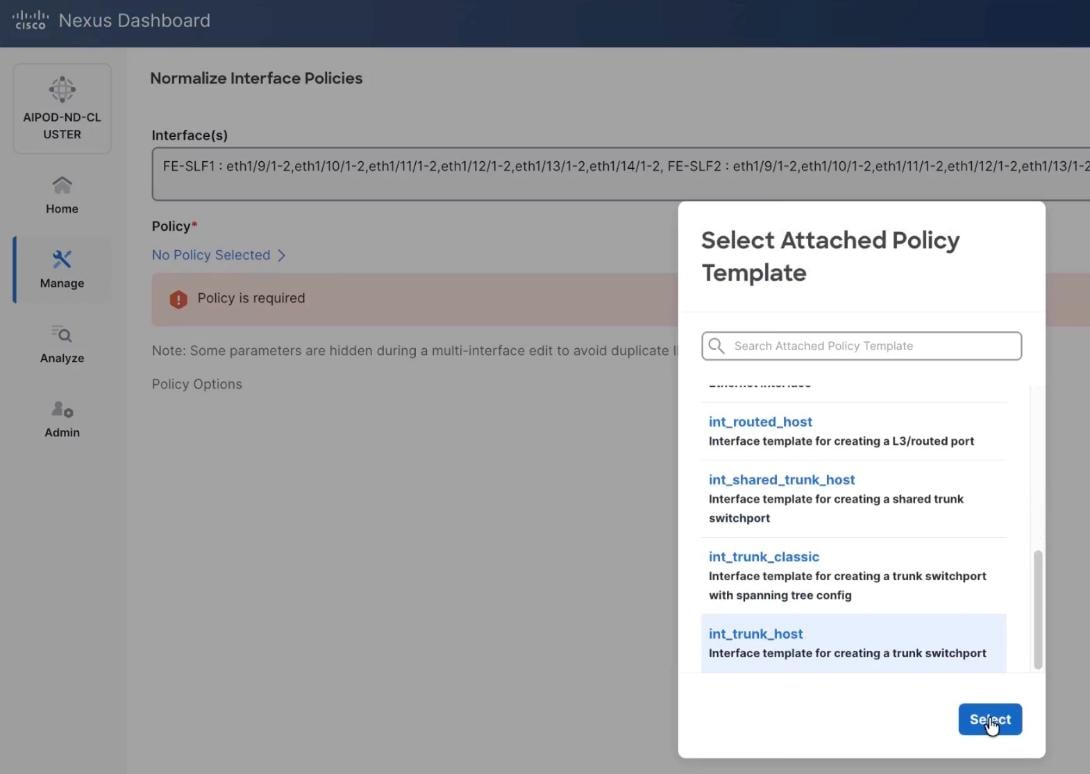

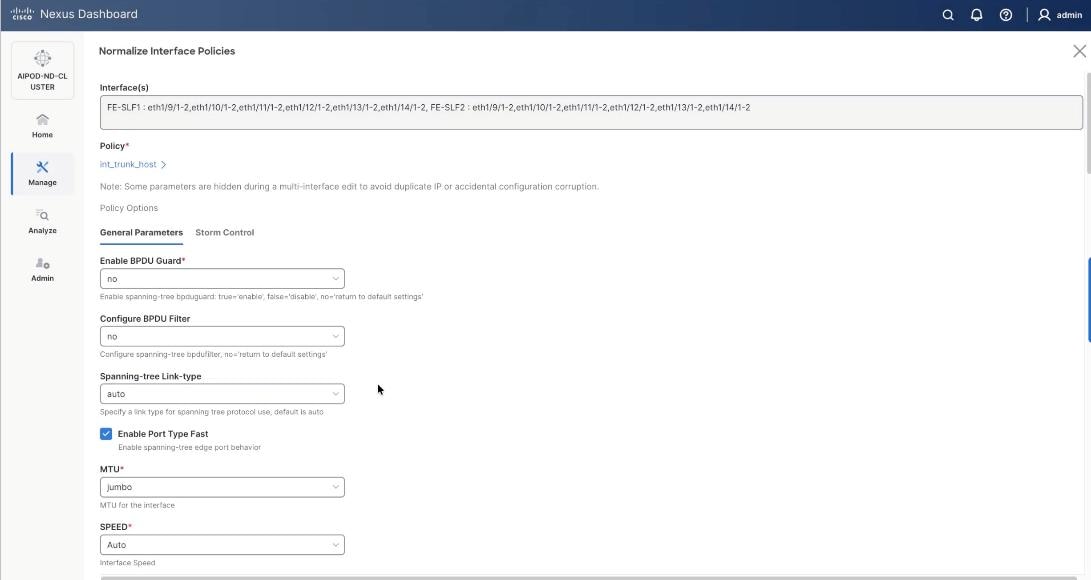

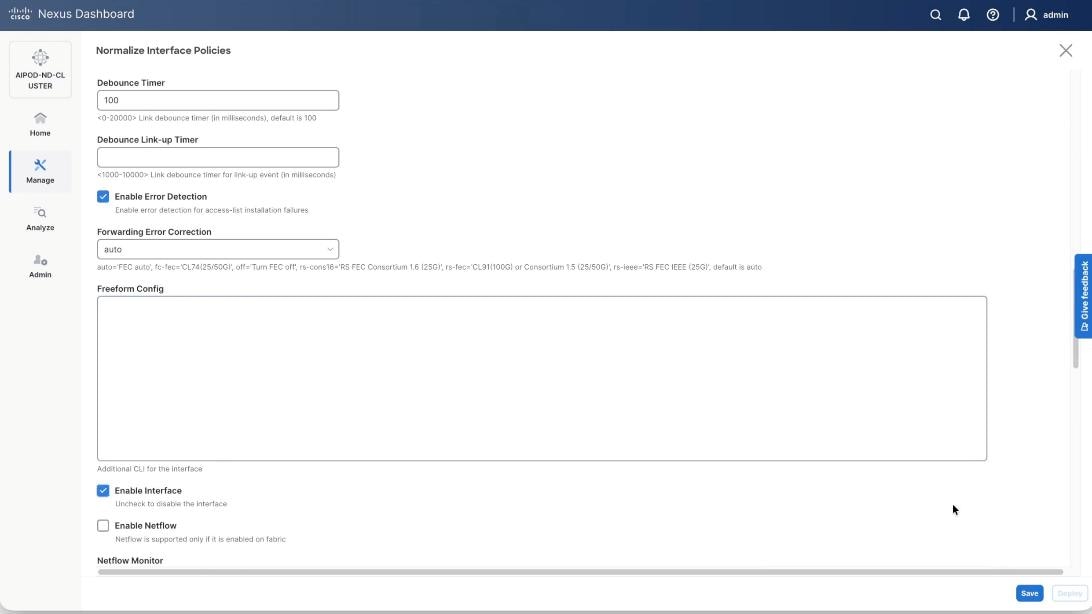

Step 2. When all VAST storage ports are selected, click Actions > Bulk Actions > Normalize.

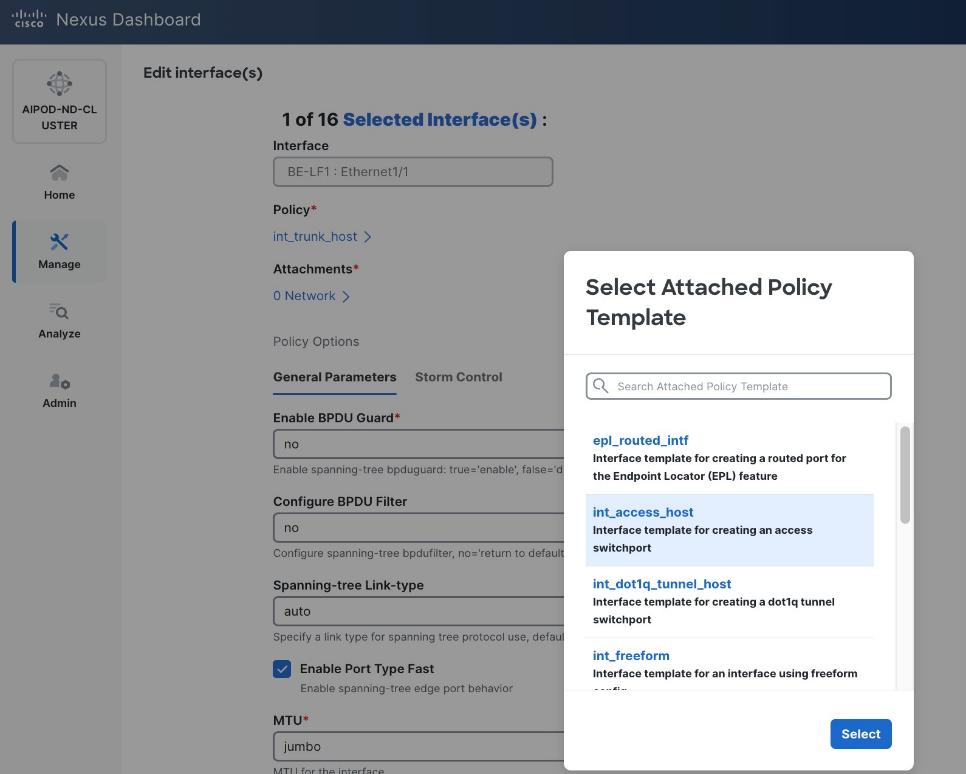

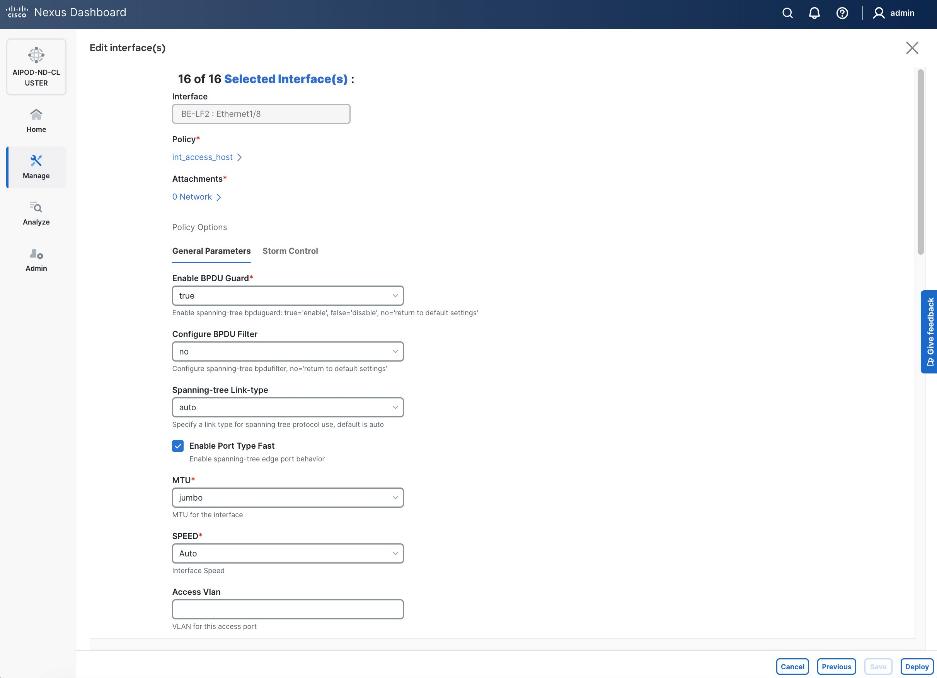

Step 3. Select trunk policy int_trunk_host. Select interface group as VAST-Storage-Network and click Save.

Step 4. On the Normalize interfaces Policy screen, scroll down to native vlan and enter native VLAN as 10 (VAST node discovery VLAN). Ensure Enable interface is checked. Click Save.

Step 5. Verify the changes to the ports with native VLAN as 10 and QoS Policies.

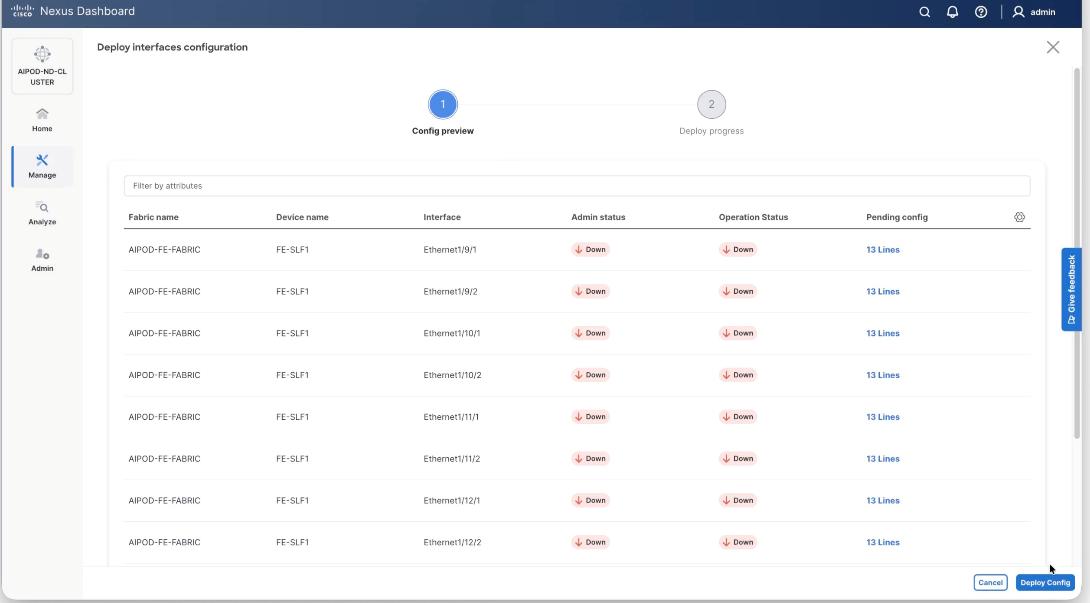

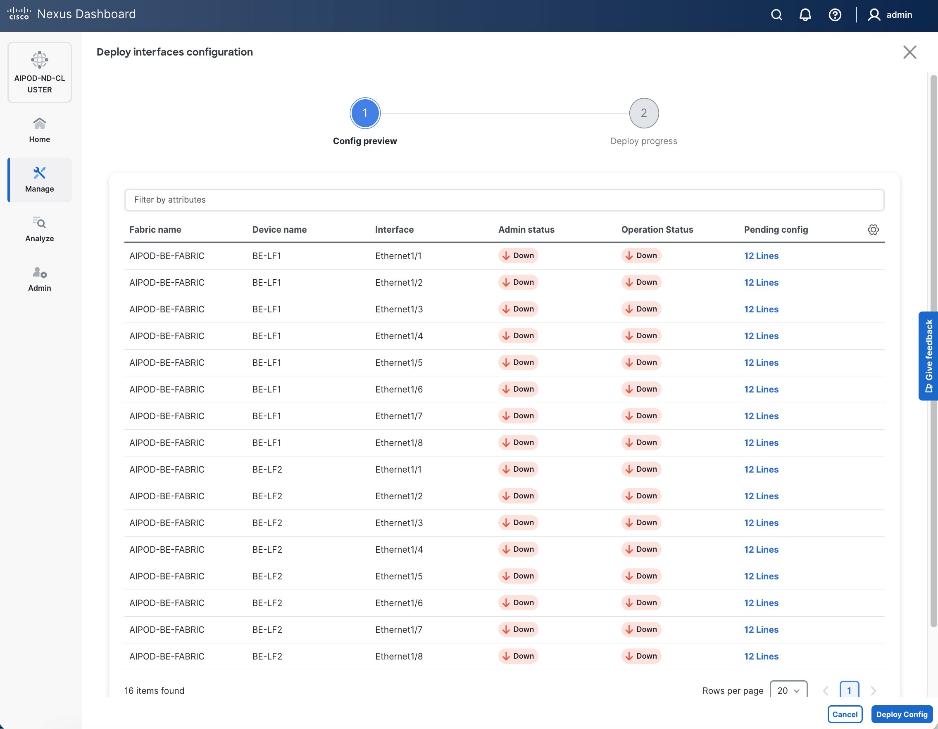

Step 6. Click Deploy Config.

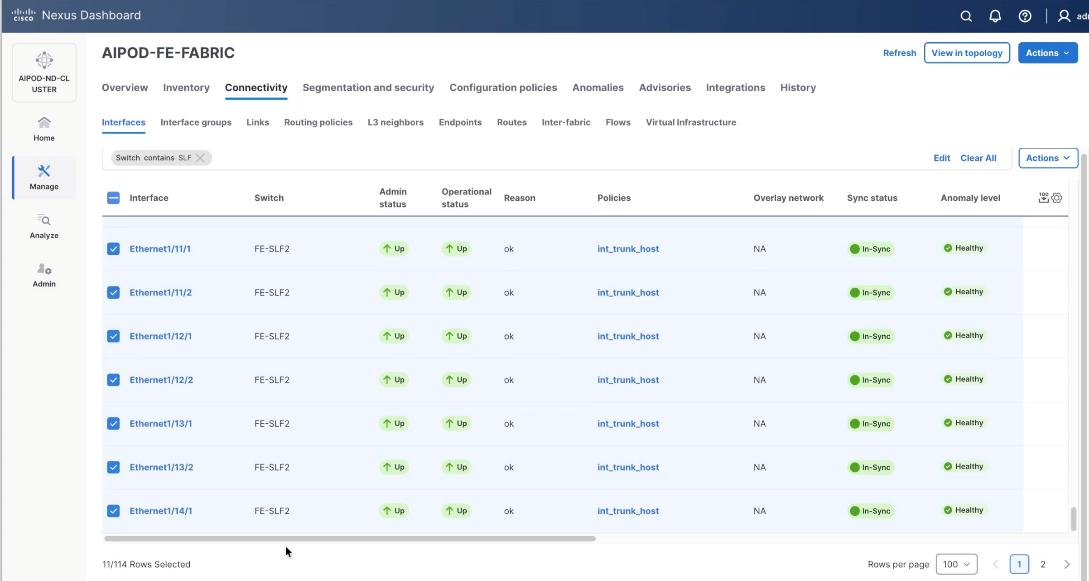

Step 7. Ensure the storage port interfaces are up.

Step 8. From the navigation menu, go to Manage > Fabrics.

Step 9. Select the FE fabric and go to Connectivity > Interfaces tab.

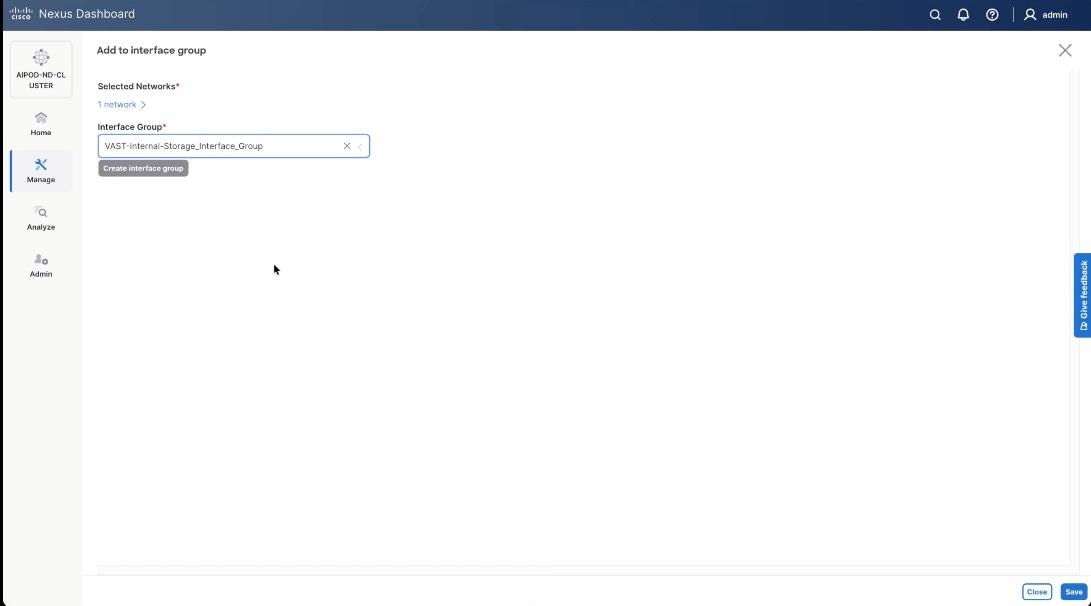

Step 10. Select ports 1/9/1 to 1/14/2 on both FE-SLF1 and FE-SLF2. From the Action drop-down list, select interface group > Add.

Step 11. Click create Interface group and name the interface group as VAST-Internal-Storage_Interface_Group, select interface type as ethernet. Click Create and then click Save.

Step 12. From the navigation menu, go to Manage > Fabrics.

Step 13. Select the FE fabric and go to Segmentation and Security > Networks tab.

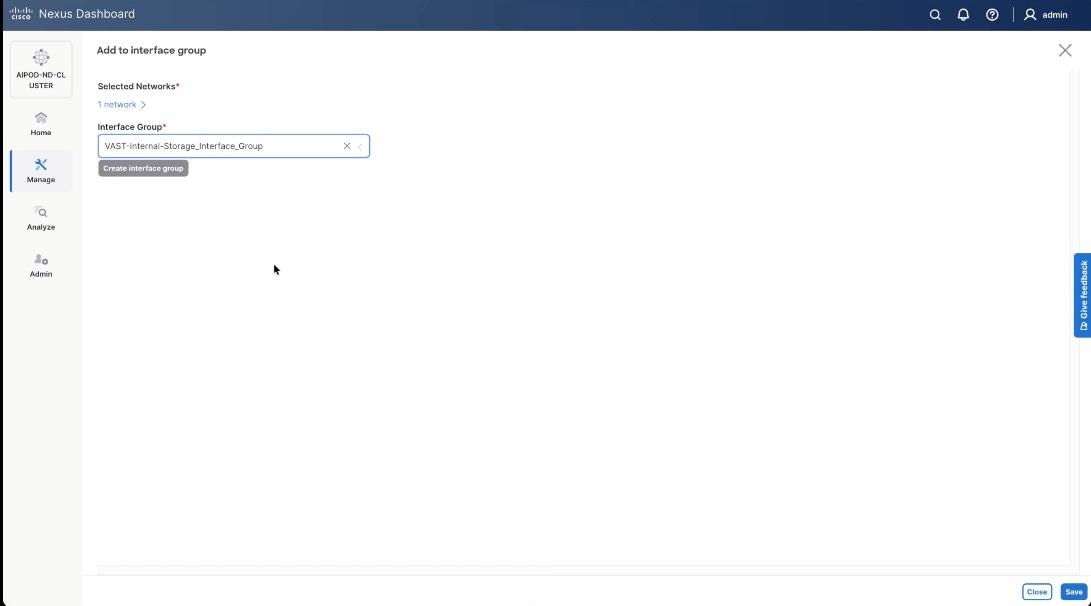

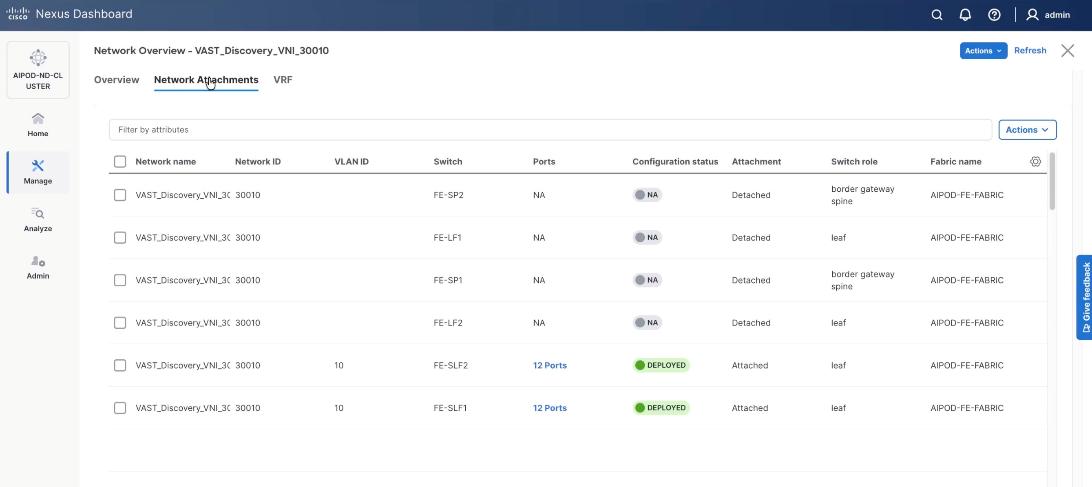

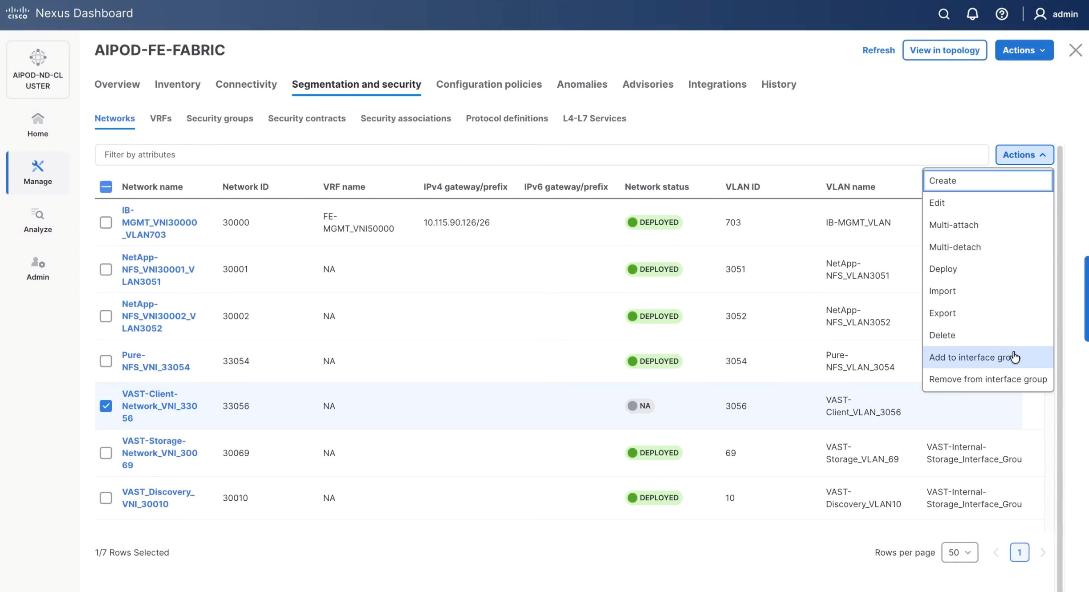

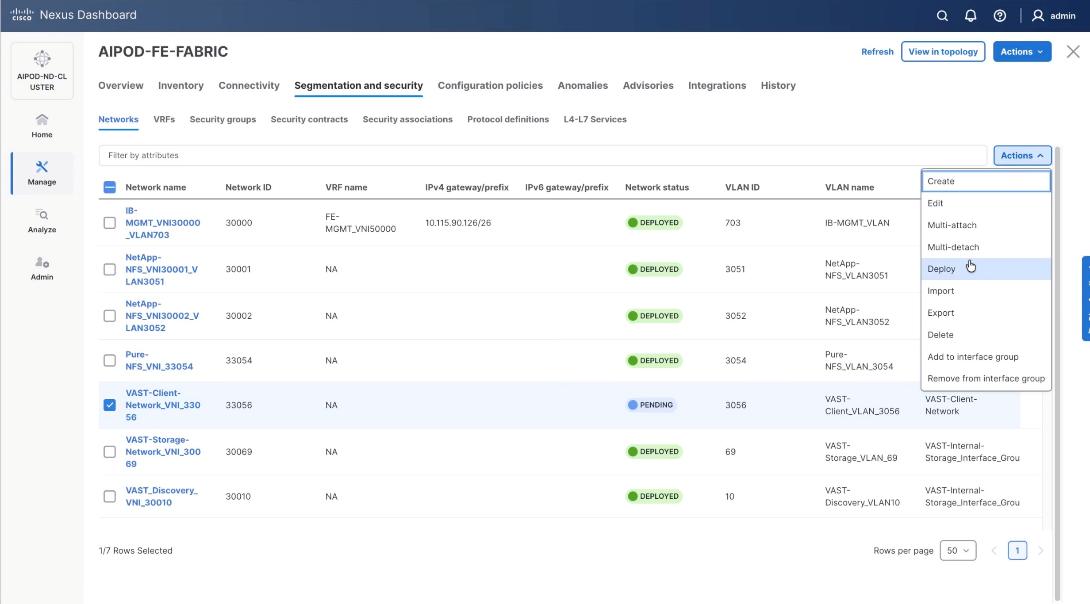

Step 14. Select VAST discovery network, VAST-Discovery_VNI_30010, click the lower Actions button and select Add to interface group from the list.

Step 15. Select the Interface group VAST-Internal-Storage_Interface_Group and click Save.

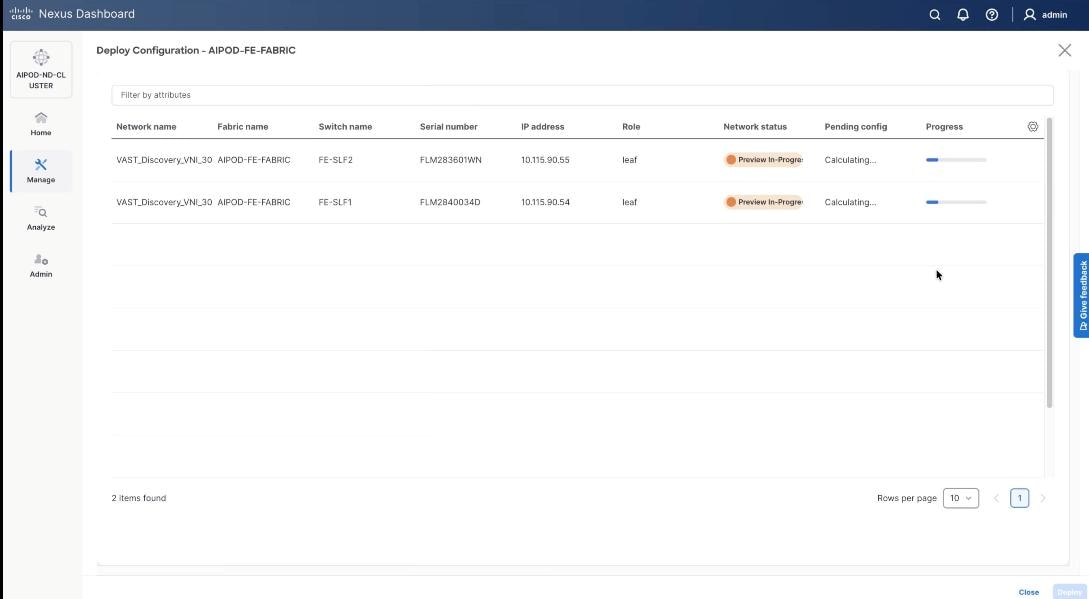

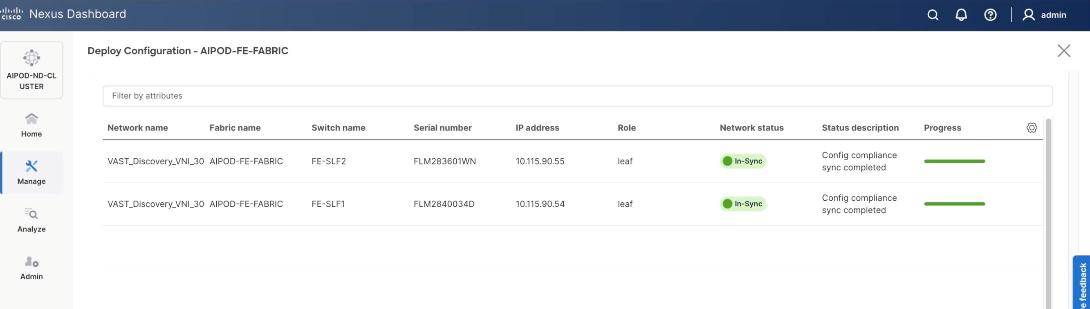

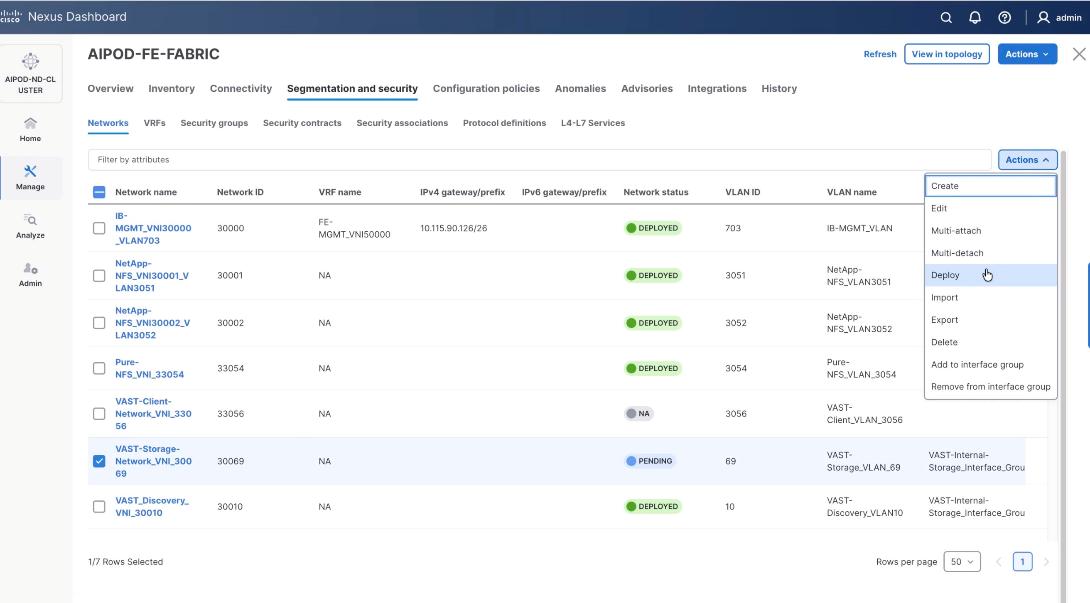

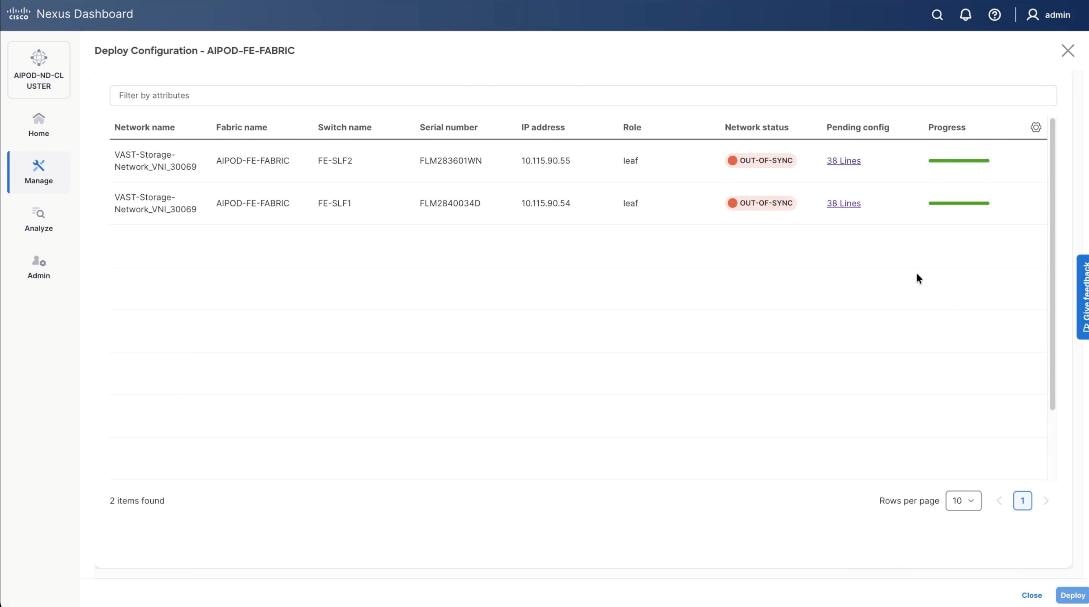

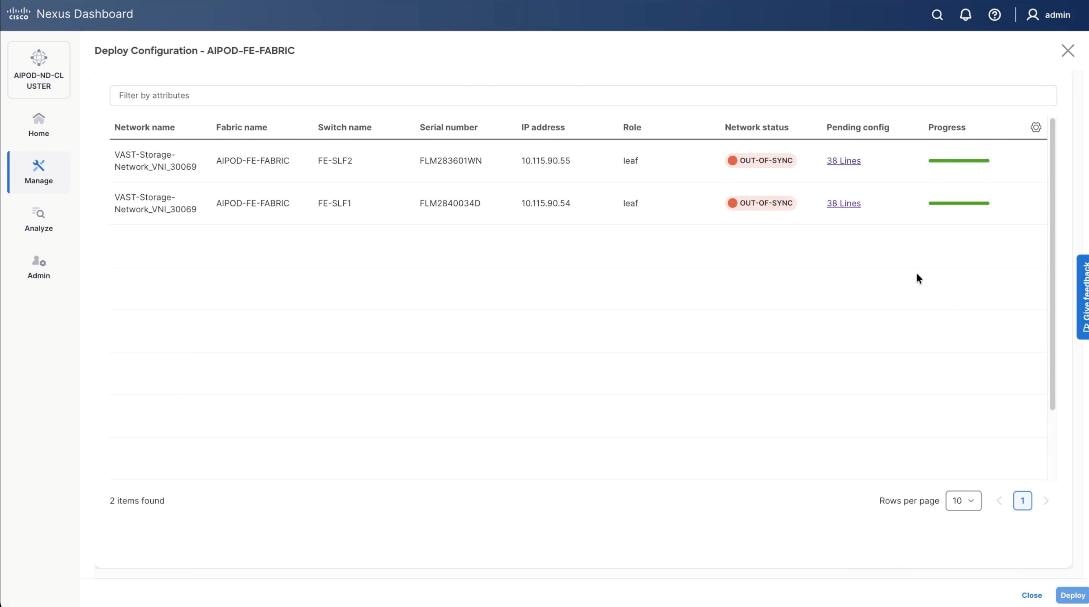

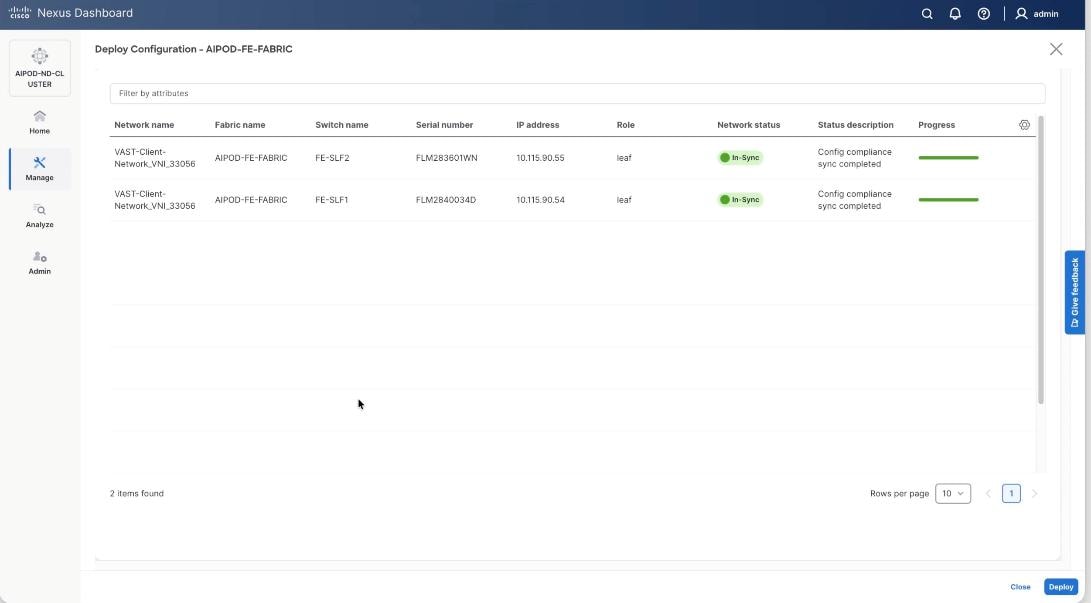

Step 16. Select VAST Discovery Network, click the Actions button and select the click Deploy.

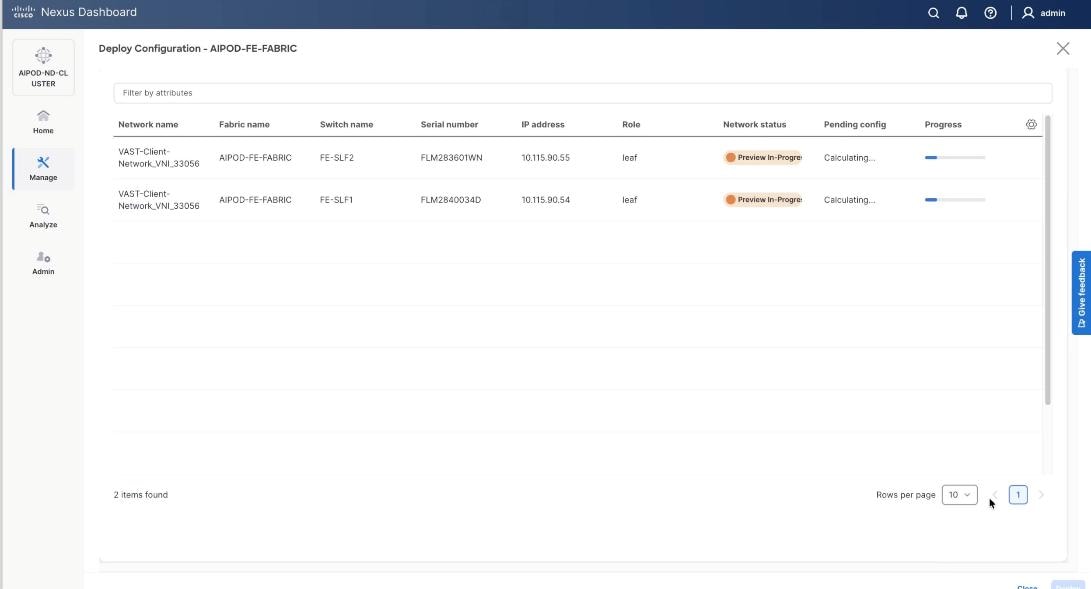

Step 17. From the Deploy Configuration screen, click Deploy.

Step 18. Verify successful deployment and click Close.

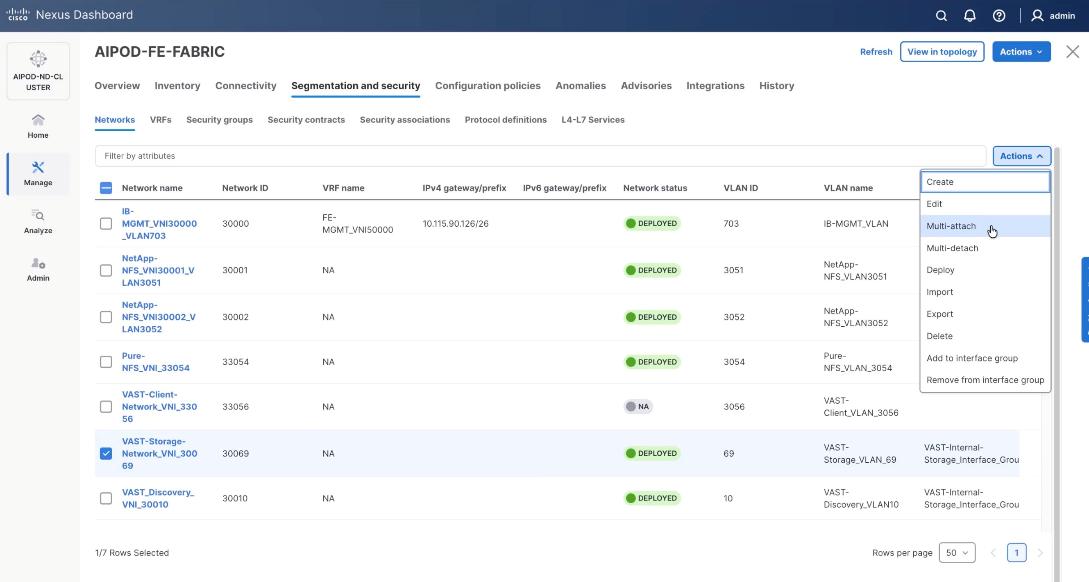

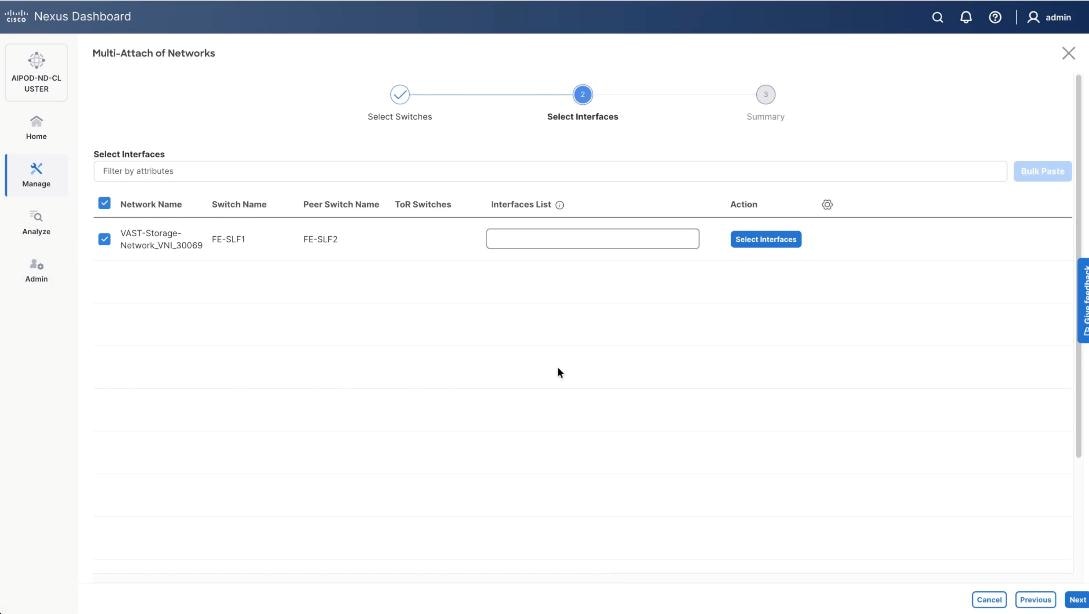

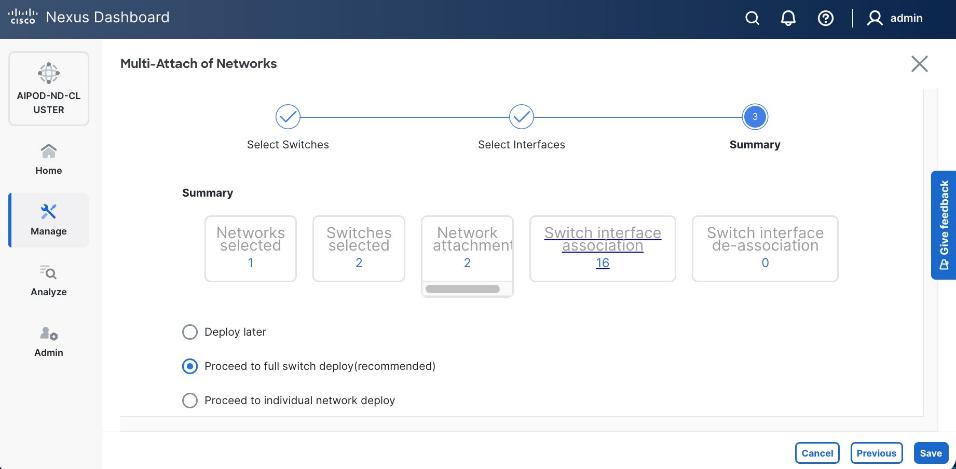

Step 19. Select the VAST discovery network, click the lower Actions button and select Multi-attach from the list.

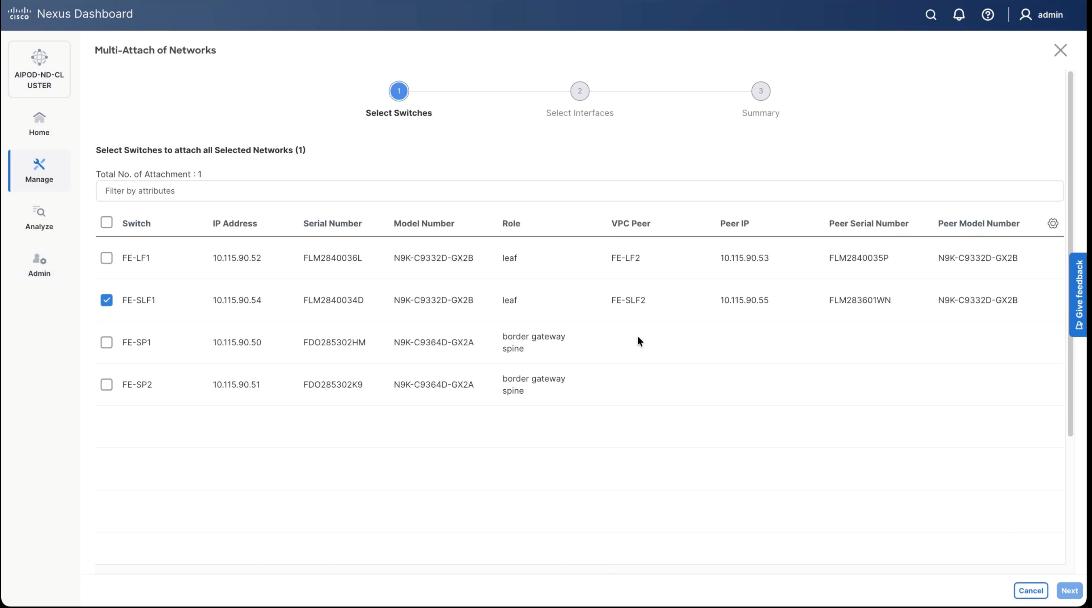

Step 20. Select the FE_SLF1 and click Next.

Step 21. Select VAST Discovery VNI and click Next.

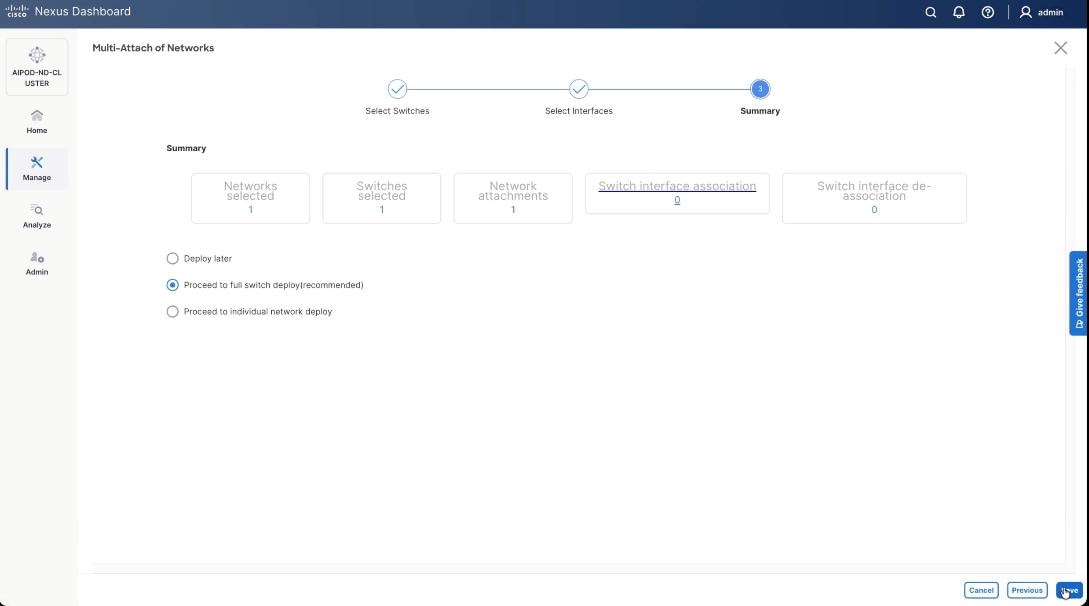

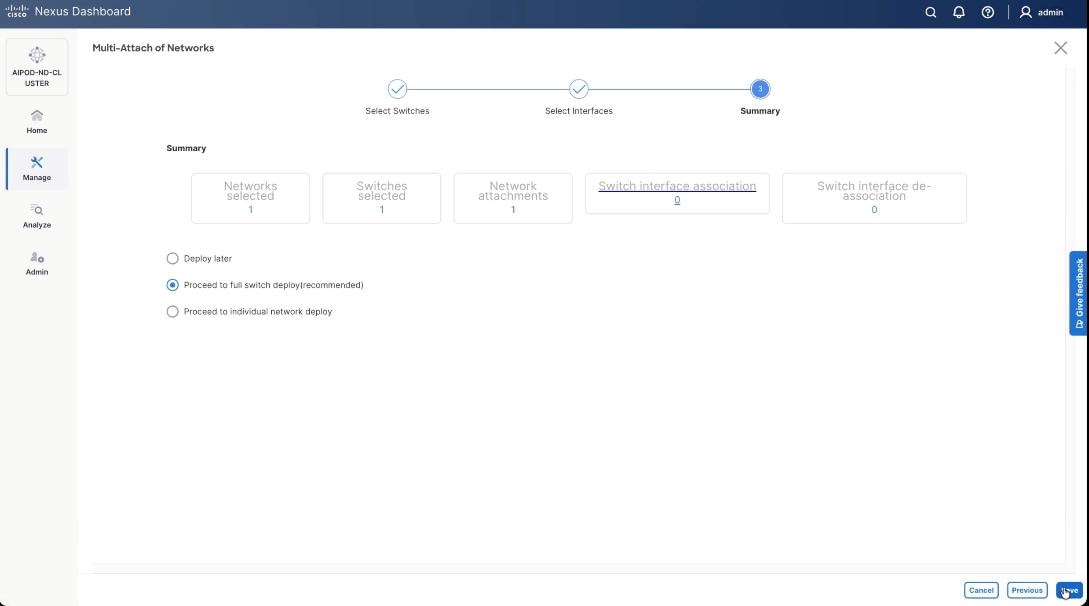

Step 22. Keep the default recommended option of Proceed to Full Switch Deployment and click Save.

Step 23. Click Deploy All.

Step 24. Verify the port attachments to both Storage leaf.

Step 25. Select VAST internal storage network, VAST-Storage-Network_VNI_30069, click the lower Actions button and select Add to interface group from the list.

Step 26. Select the Interface group, VAST-Internal-Storage_Interface_Group and click Save.

Step 27. Select VAST Storage Network, click the Actions button and select Deploy.

Step 28. From the Deploy Configuration screen, click Deploy.

Step 29. Verify the successful deployment and click Close.

Step 30. Select the VAST storage network, click the lower Actions and select Multi-attach from the list.

Step 31. Select FE_SLF1 and click Next.

Step 32. Select VAST internal Storage VNI and click Next.

Step 33. Keep the default recommended option of Proceed to Full Switch Deployment and click Save.

Step 34. Click Deploy All.

Step 35. Verify successful the deployment of network VAST-Discovery_VNI_30010 and click Close.

Procedure 4. Deploy VAST External Client Network

This procedure details the following:

● Add ports and network to Interface groups: VAST-Client-Network

● Attach VAST-Client-Network_VNI_33056, add network to interface group and Deploy network on the front end Fabric (FE-Fabric)

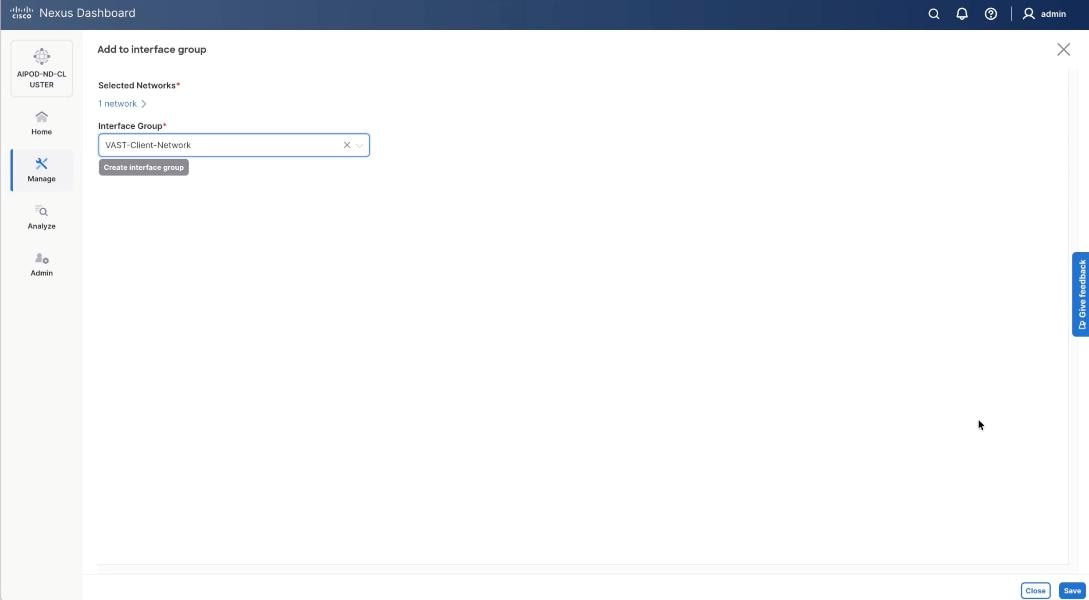

Step 1. Select the VAST discovery network VAST_Client-Network_VNI_33056, click the lower Actions button and select Add to interface group from the list.

Step 2. Select the Interface group VAST-Client-Network and click Save. If it doesn’t exist create the interface group.

Step 3. Select VAST Client Network, click the Actions button and select Deploy.

Step 4. From the Deploy Configuration screen, click Deploy.

Step 5. Verify a successful deployment and click Close.

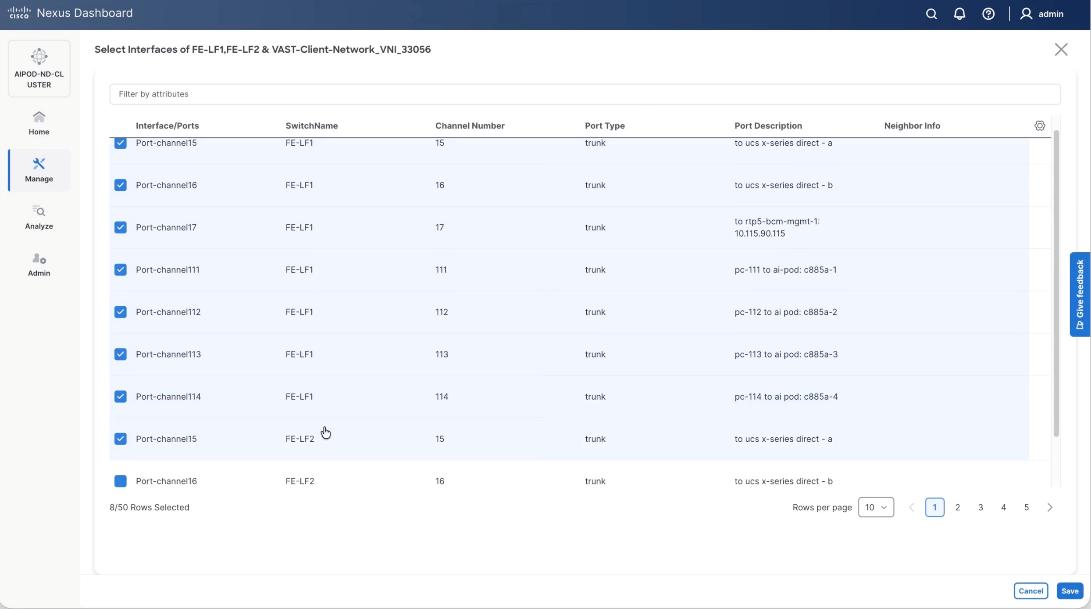

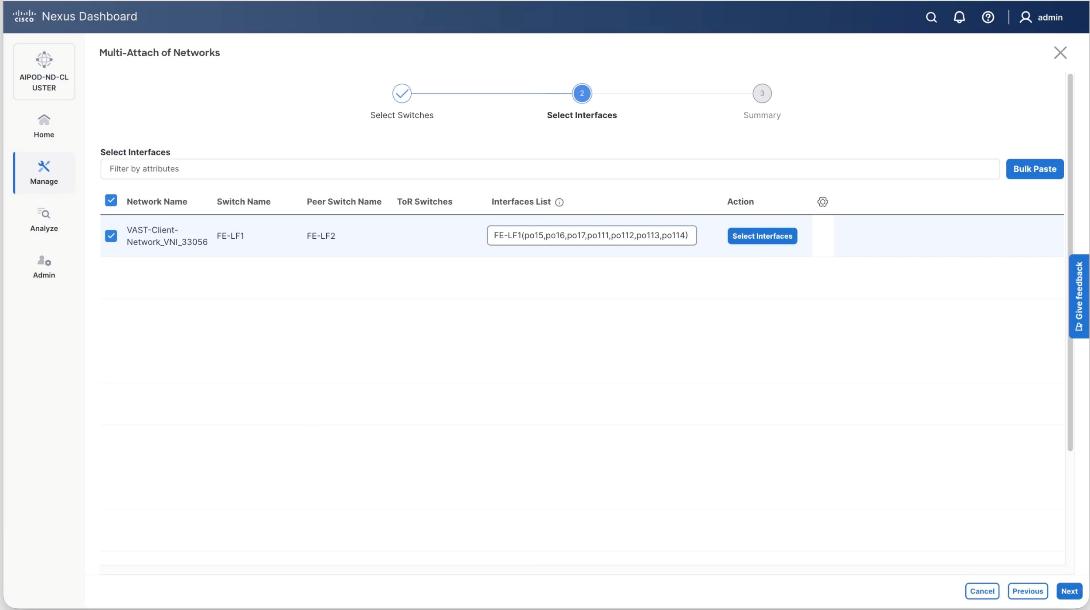

Step 6. Select the VAST Client network VAST-Client-Network_VNI_33056, click the lower Actions button and select Multi-attach from the list.

Step 7. Select the FE_SLF1 and click Next.

Step 8. Add the VAST client network to the port channel interfaces for the GPU nodes connected to the FE-LF1 and FE-LF2 client leaf switches. In the existing deployment theses are port channel 111, port channel 112, port channel 113 and port channel 114. Also add the BCM nodes and X-Series management nodes to allow access to VAST client network.

Step 9. Select the VAST-Client-Network and click Select interfaces.

Step 10. Select the port channel interfaces for 4x C885 GPU nodes on FE-LF1 and FE-LF2 . Also add the BCM nodes and X-Series management nodes to allow access to VAST client network. Click Save.

Step 11. Verify the port channel and click Next.

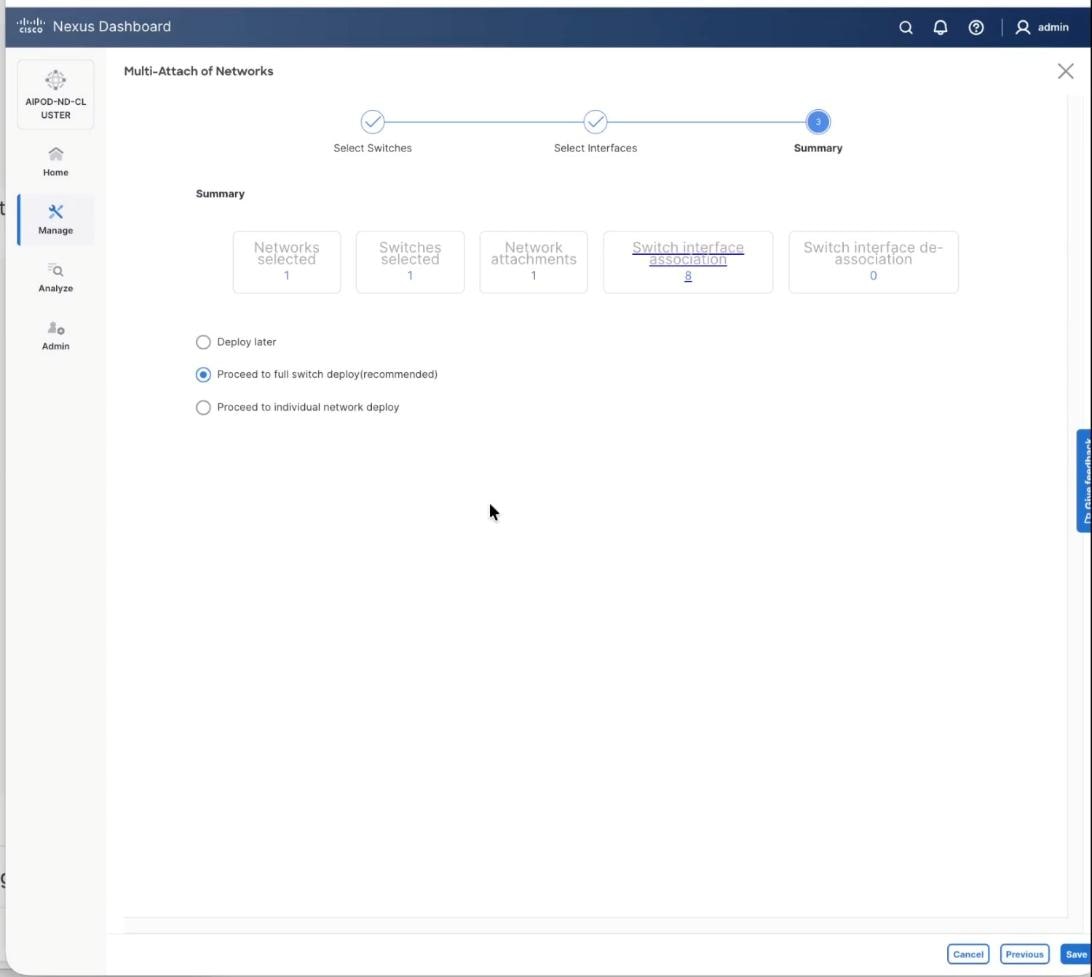

Step 12. Click Save.

Step 13. The configuration to deploy is shown below. Click Pending Config to see the configuration being deployed. The pending configuration on one leaf switch is provided as a reference at the end. Click Deploy All.

Step 14. Click Close.

Step 15. Verify a successful deployment.

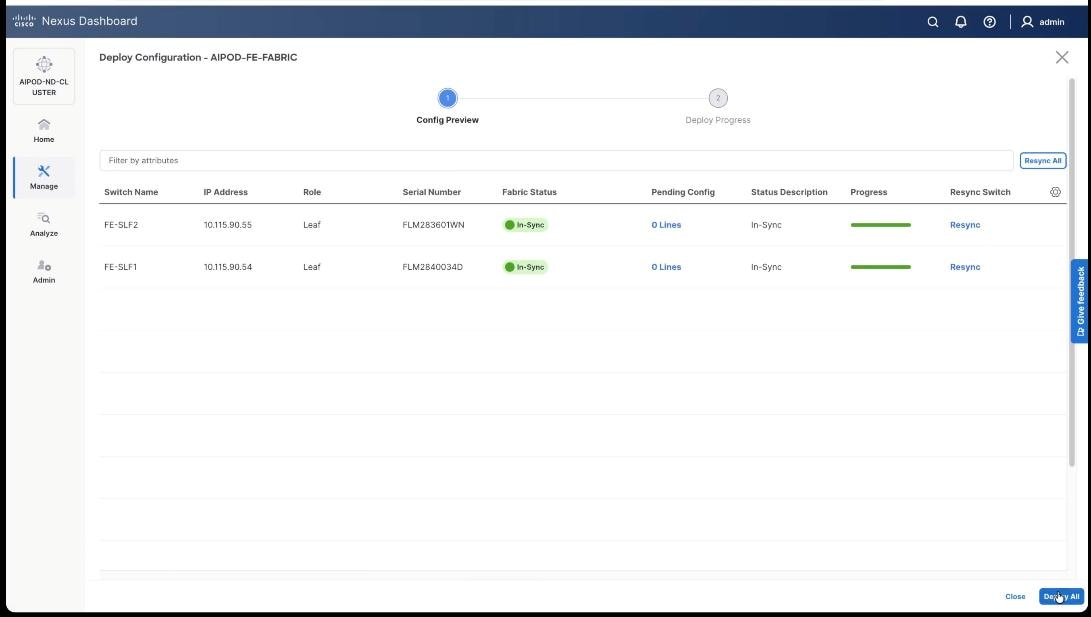

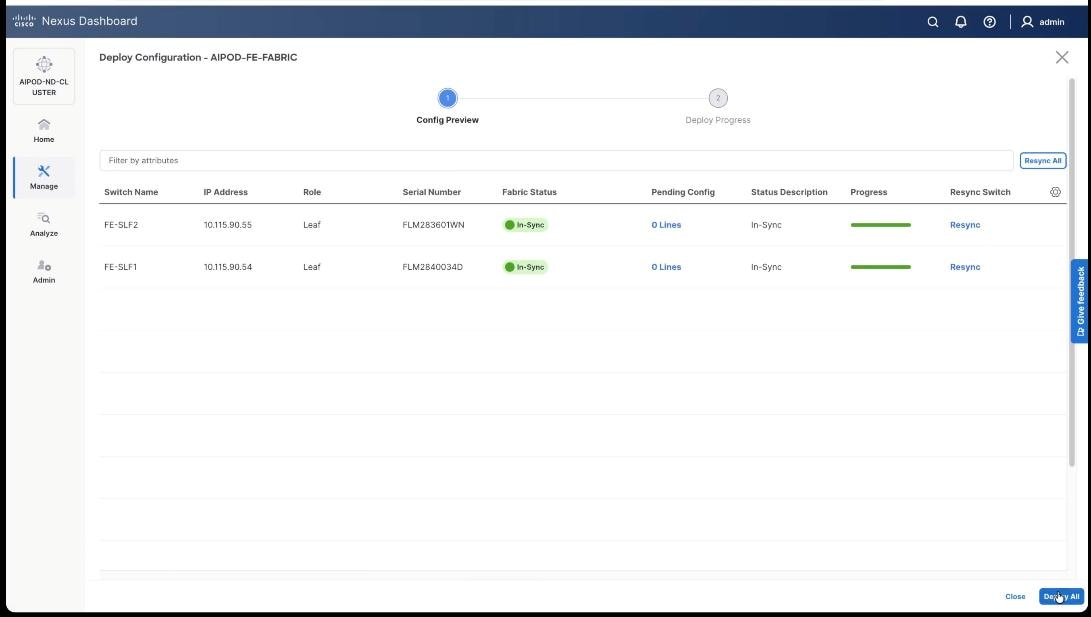

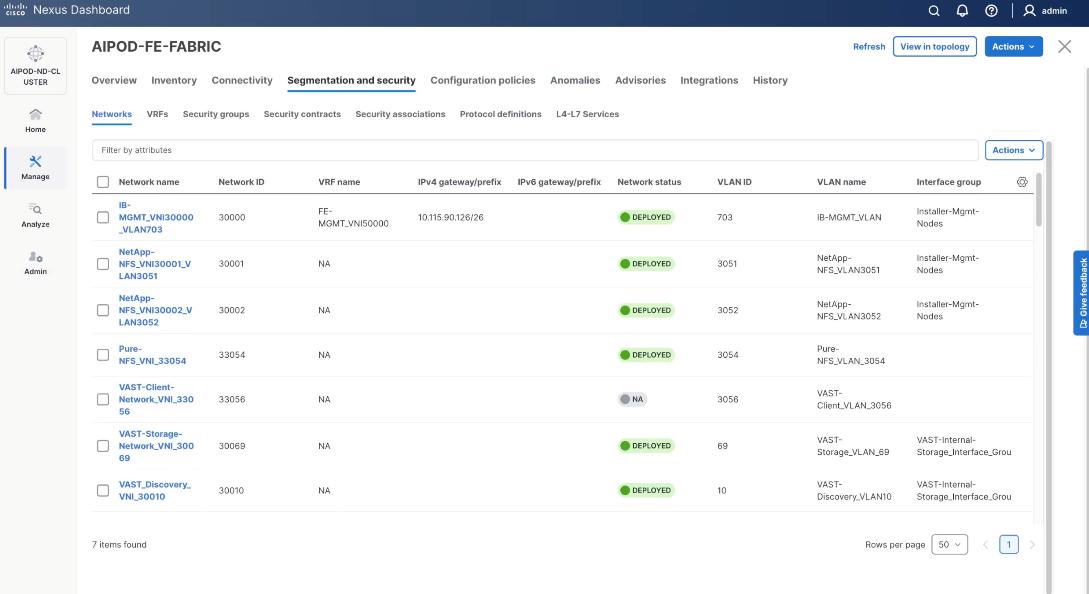

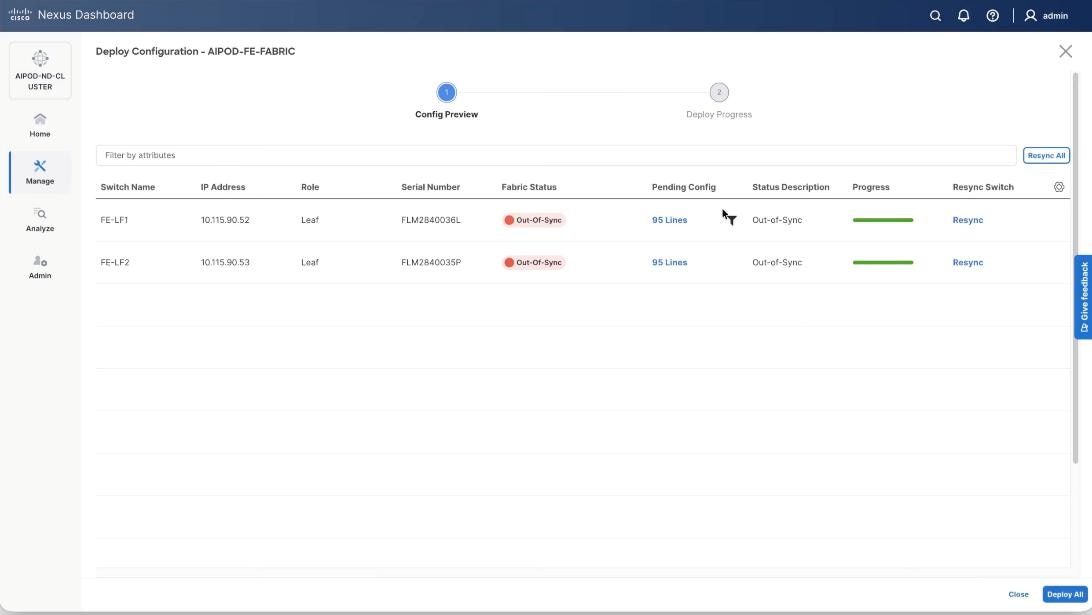

Step 16. Verify a successful deployment and propagation of configurations from Nexus Dashboard controller to nexus switches. Select the FE fabric and go to Segmentation and Security > Networks. Click Action and click Recalculate and Deploy.

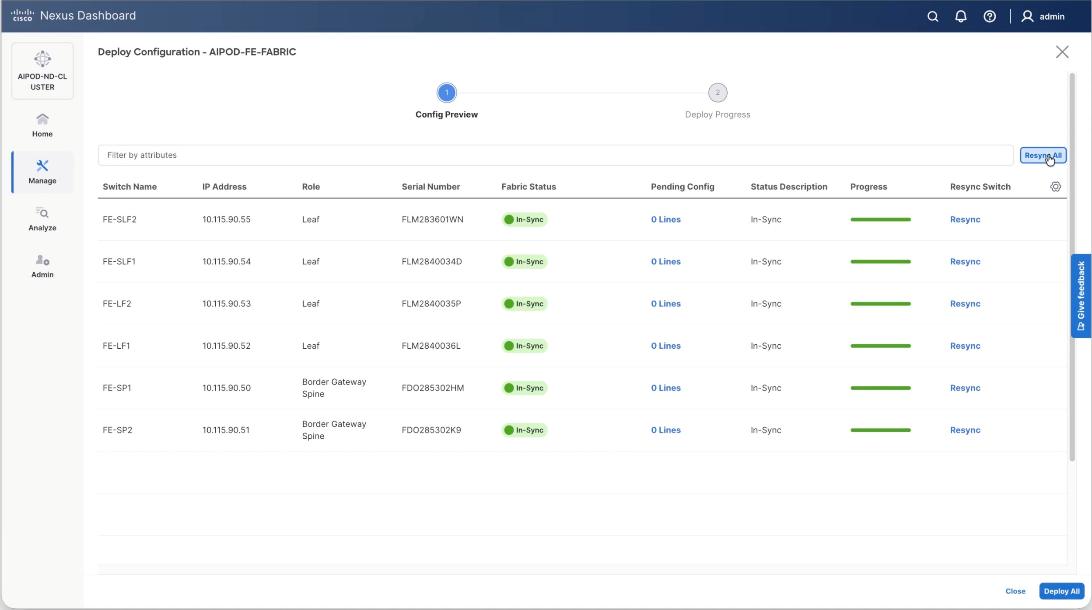

Step 17. Verify in-sync status of FE Fabric.

Step 18. Click Resync All to confirm synchronization of FE Fabric.

Cisco Nexus Backend Fabric Setup

In this setup, the Nexus Backend Fabric consisted of 2 spine and 2 leaf switches. This fabric was cabled according to Table 4. The fabric switch details are listed in Table 18.

Table 18. Backend Fabric Switch Details

| Switch |

Role |

OOB IP |

Firmware |

Model |

| BE-LF1 |

Leaf |

10.115.90.58 |

10.4(5) |

Cisco Nexus 9332D-GX2B |

| BE-LF2 |

Leaf |

10.115.90.59 |

10.4(5) |

Cisco Nexus 9332D-GX2B |

| BE-SP1 |

Spine |

10.115.90.60 |

10.4(5) |

Cisco Nexus 9364D-GX2A |

| BE-SP2 |

Spine |

10.115.90.61 |

10.4(5) |

Cisco Nexus 9364D-GX2A |

Physical Connectivity

Note: Follow the physical connectivity guidelines in the Connectivity Design section.

Initial Configuration of Switches

The following procedures describe this basic configuration of the Cisco Nexus backend fabric switches for use in the solution. This procedure assumes the use of Cisco Nexus 9000 10.4(5), the Cisco suggested Nexus switch release at the time of this validation.

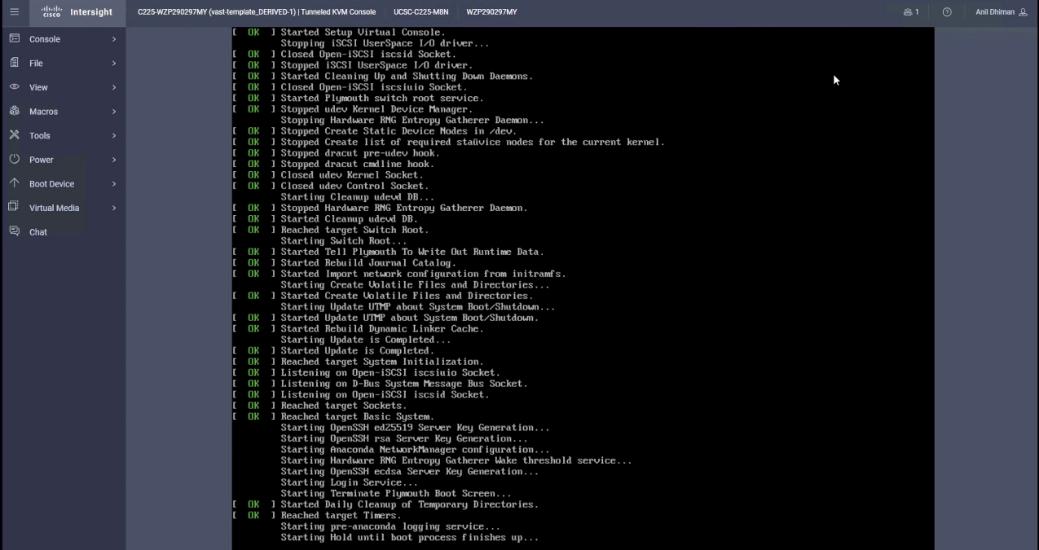

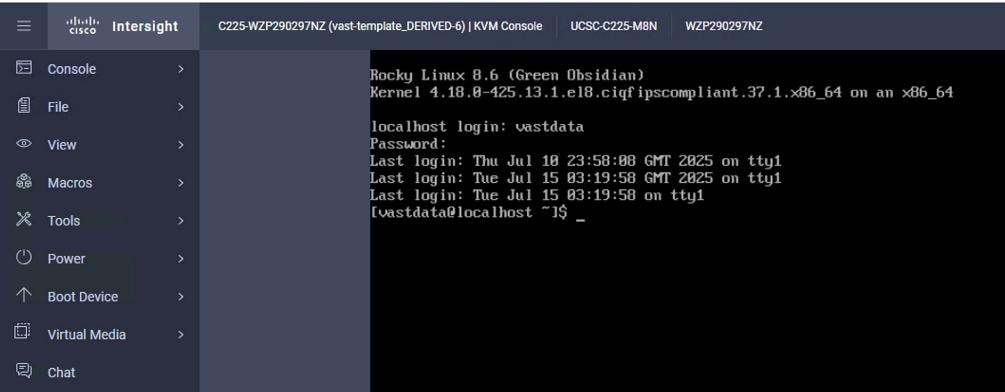

Procedure 1. Set Up Initial Configuration from a serial console

Step 1. Set up the initial configuration for each backend fabric switch as listed in Table 18.

Step 2. Configure the switch.

Note: On initial boot, the NX-OS setup automatically starts and attempts to enter Power on Auto Provisioning.

Abort Power On Auto Provisioning [yes - continue with normal setup, skip - bypass password and basic configuration, no - continue with Power On Auto Provisioning] (yes/skip/no)[no]: yes

Disabling POAP.......Disabling POAP

poap: Rolling back, please wait... (This may take 5-15 minutes)

---- System Admin Account Setup ----

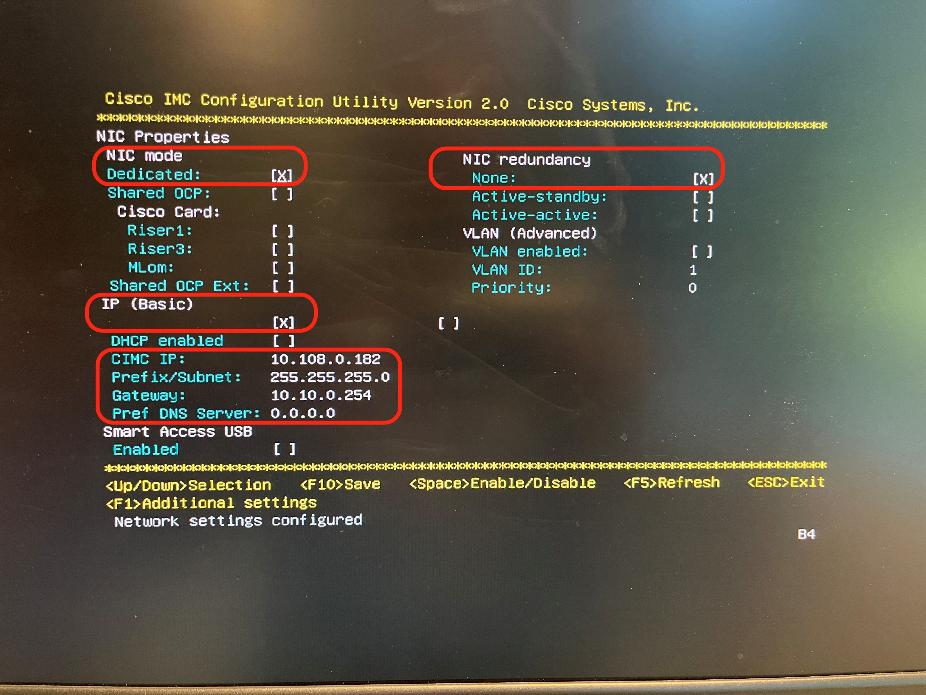

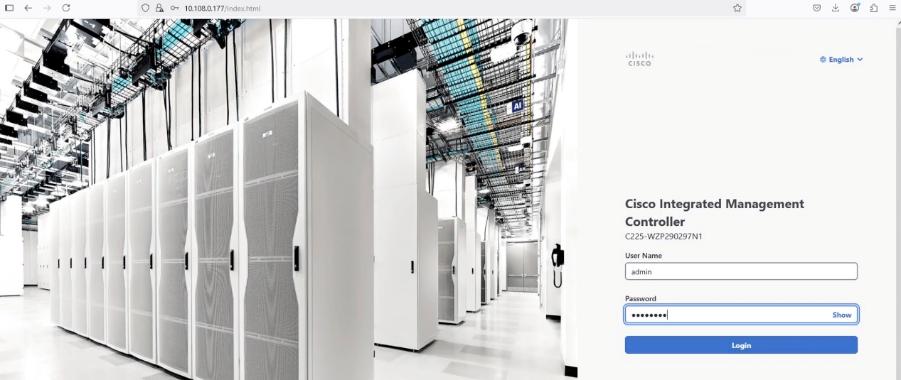

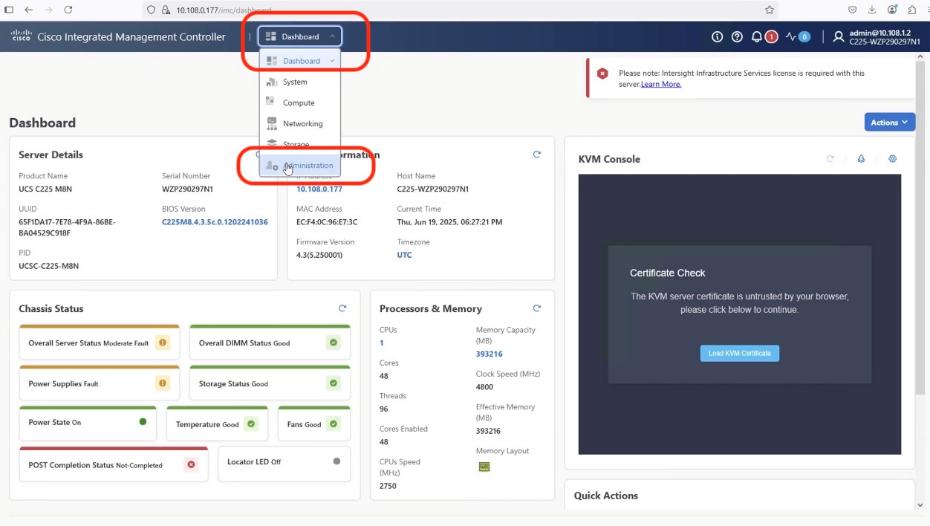

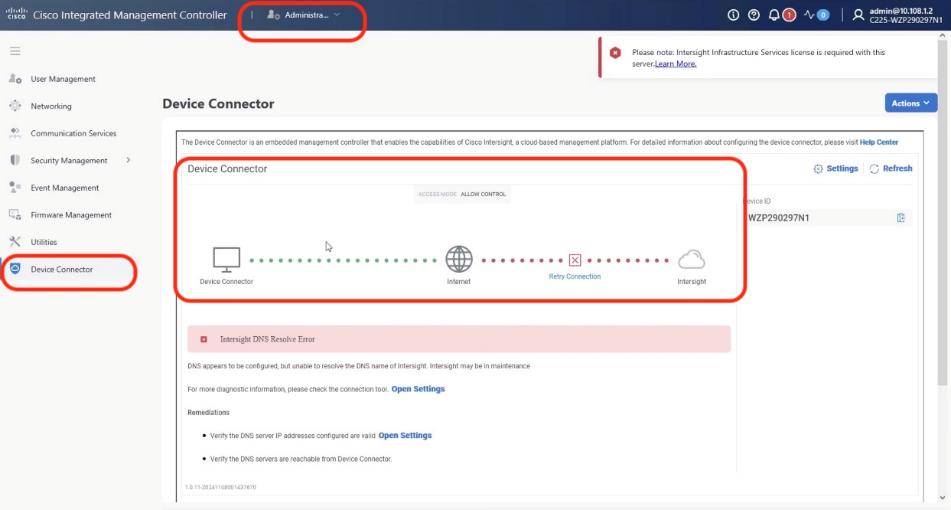

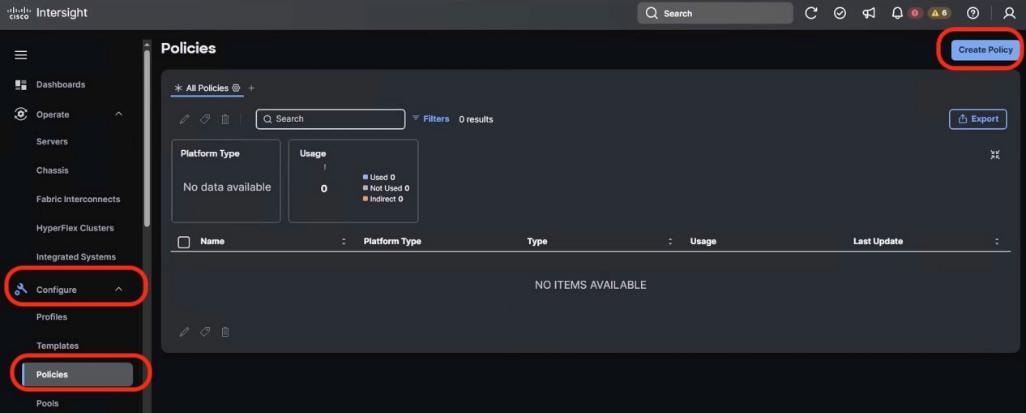

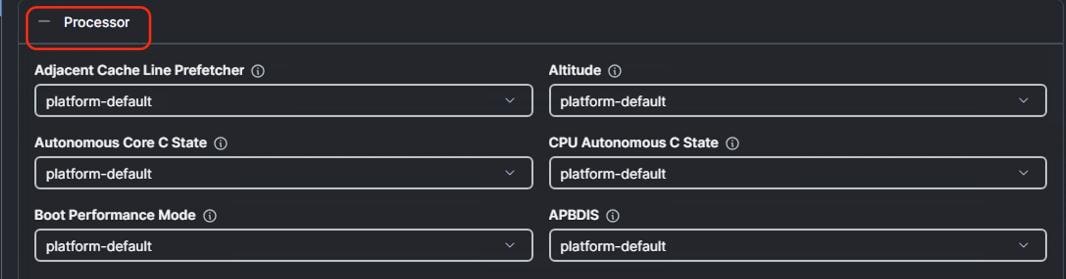

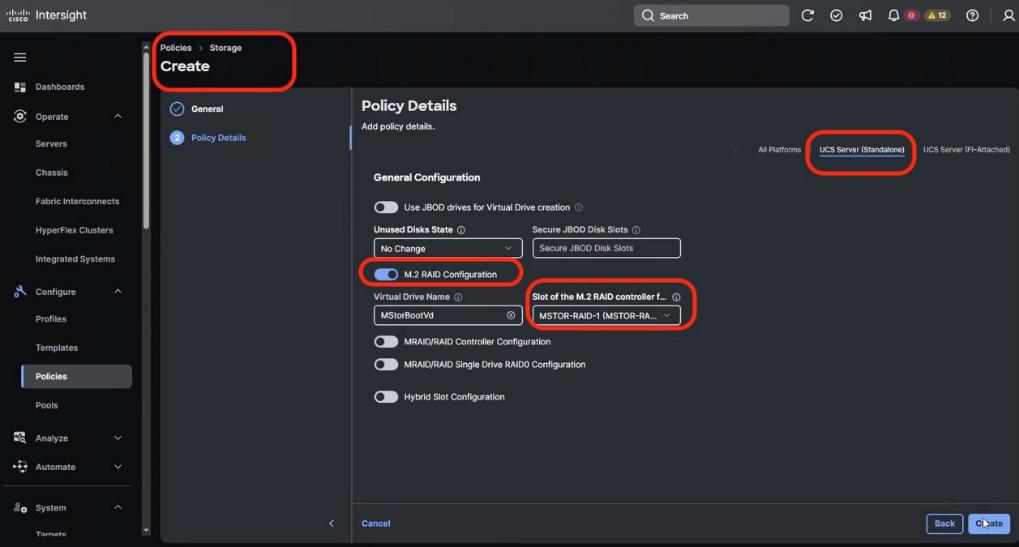

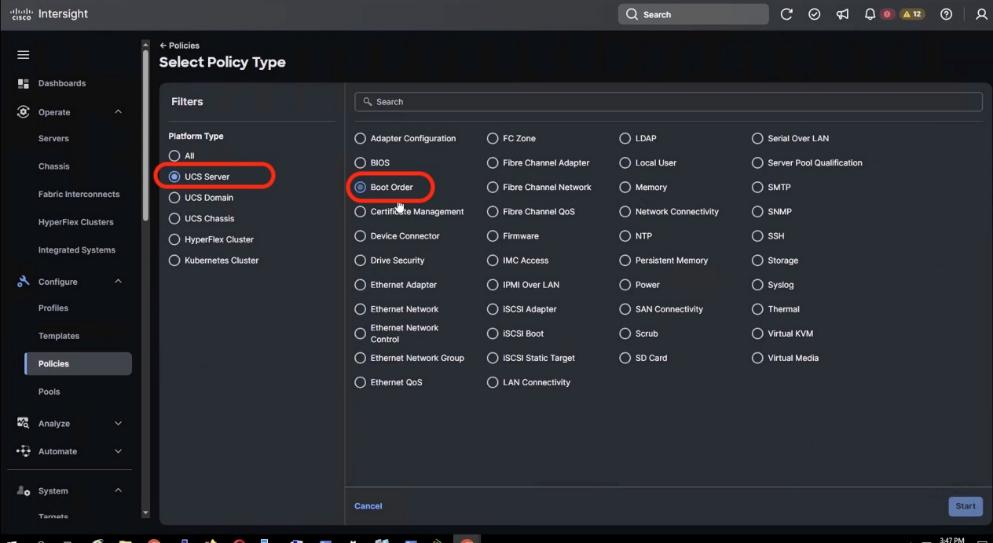

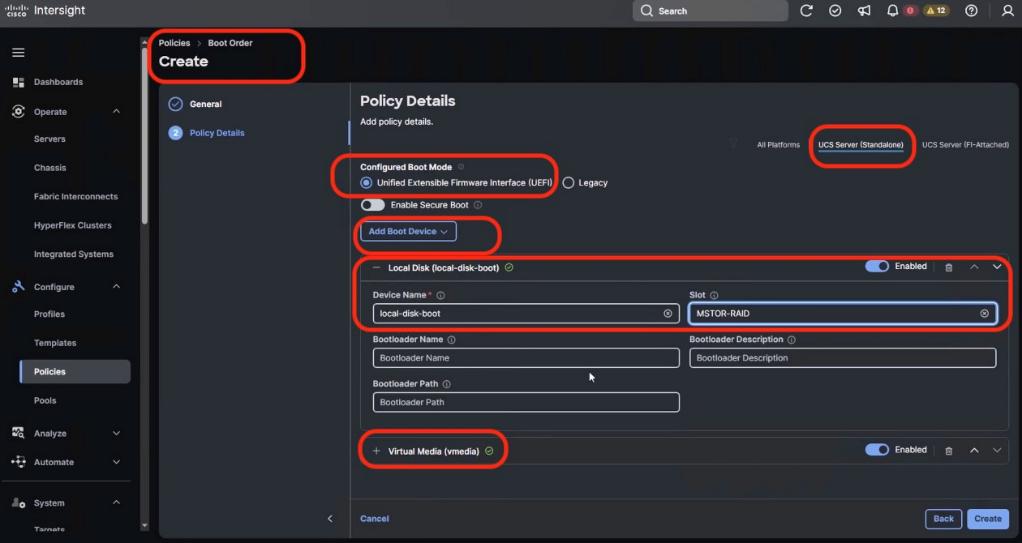

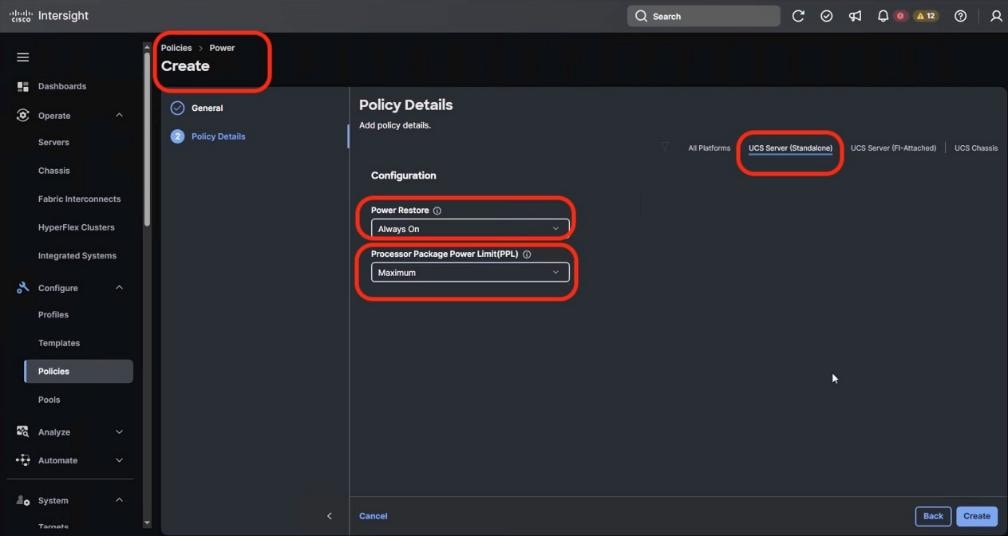

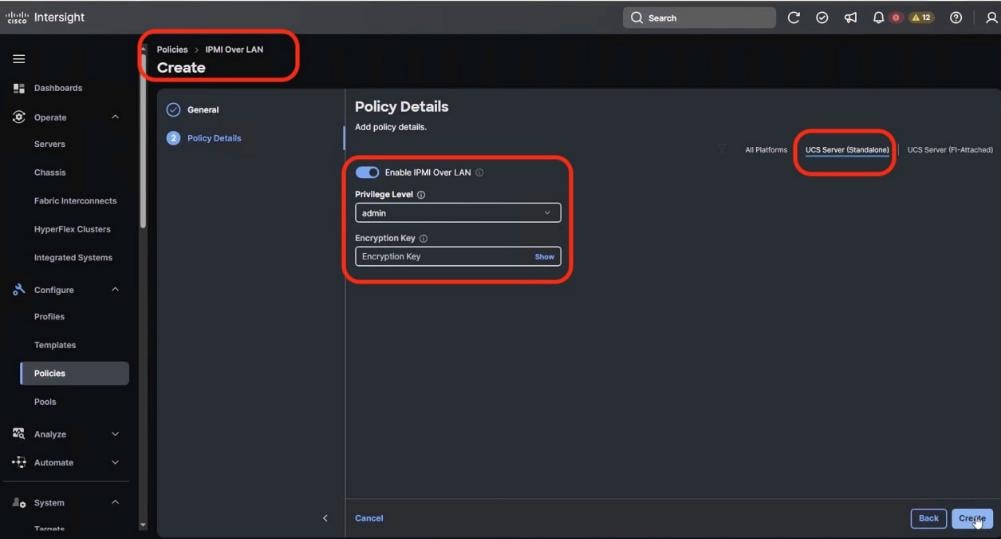

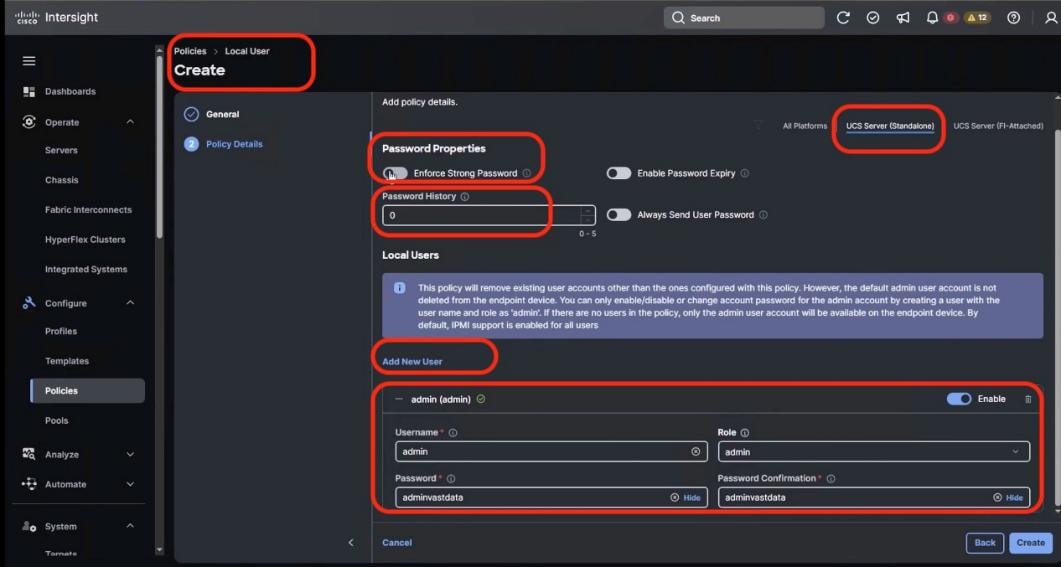

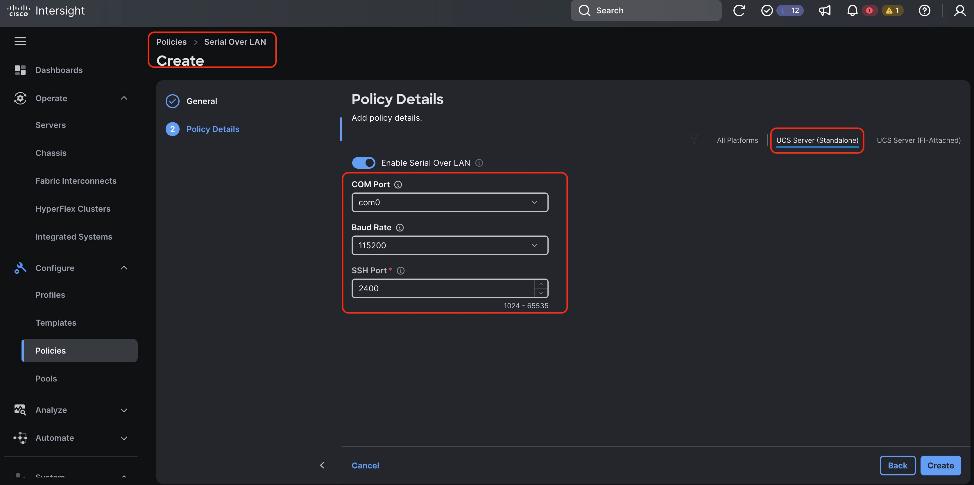

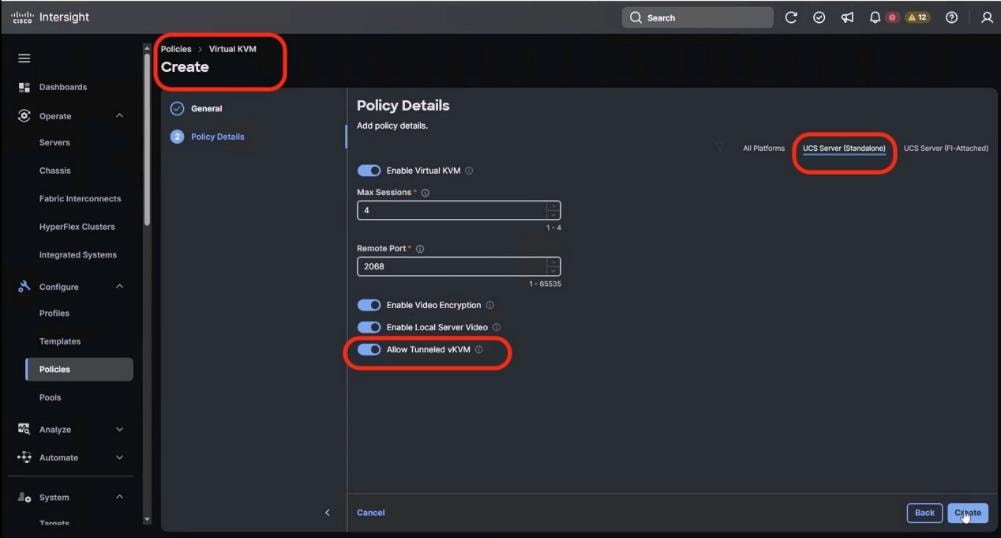

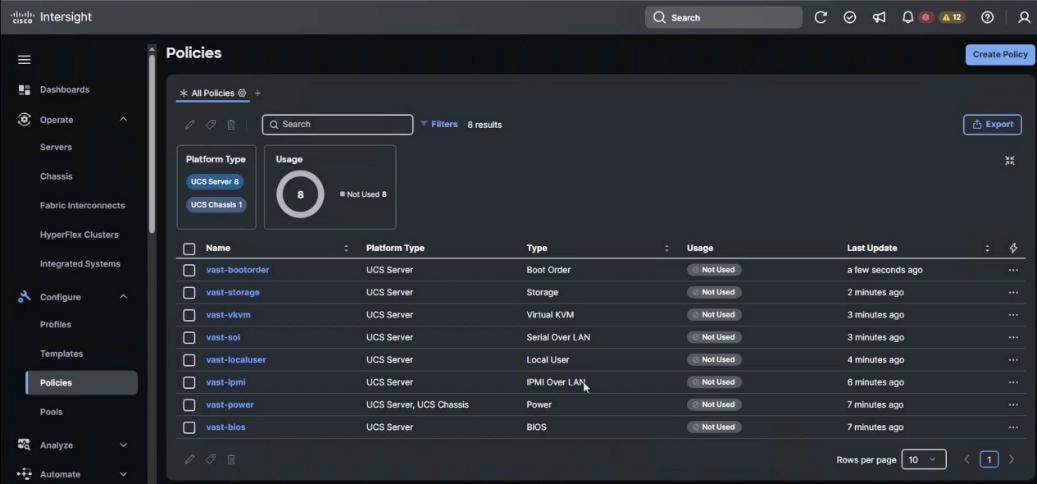

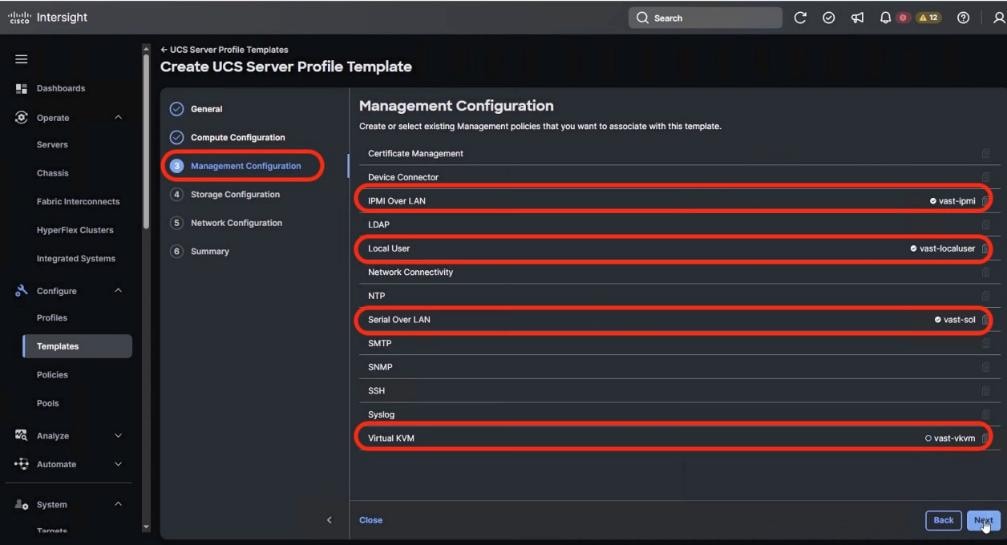

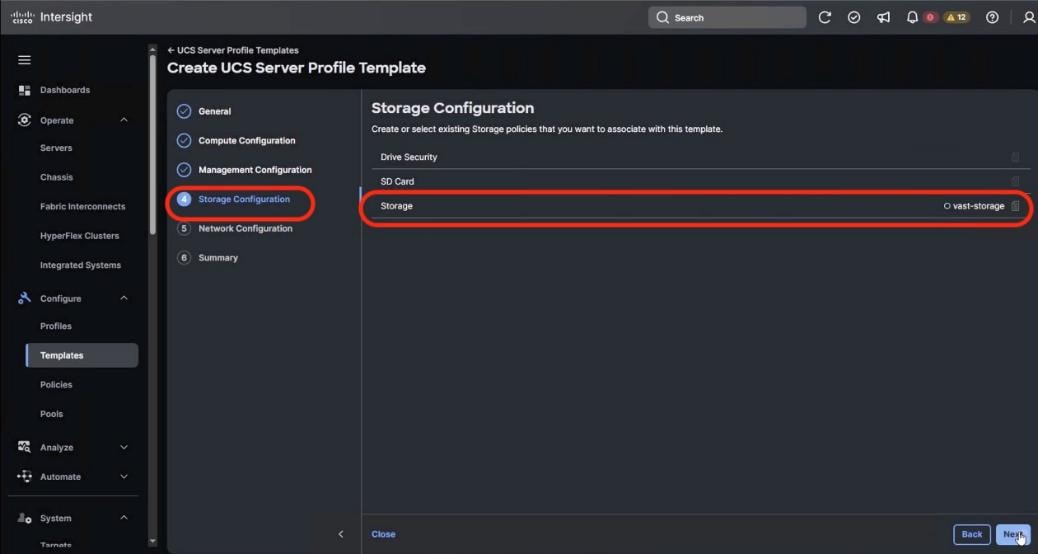

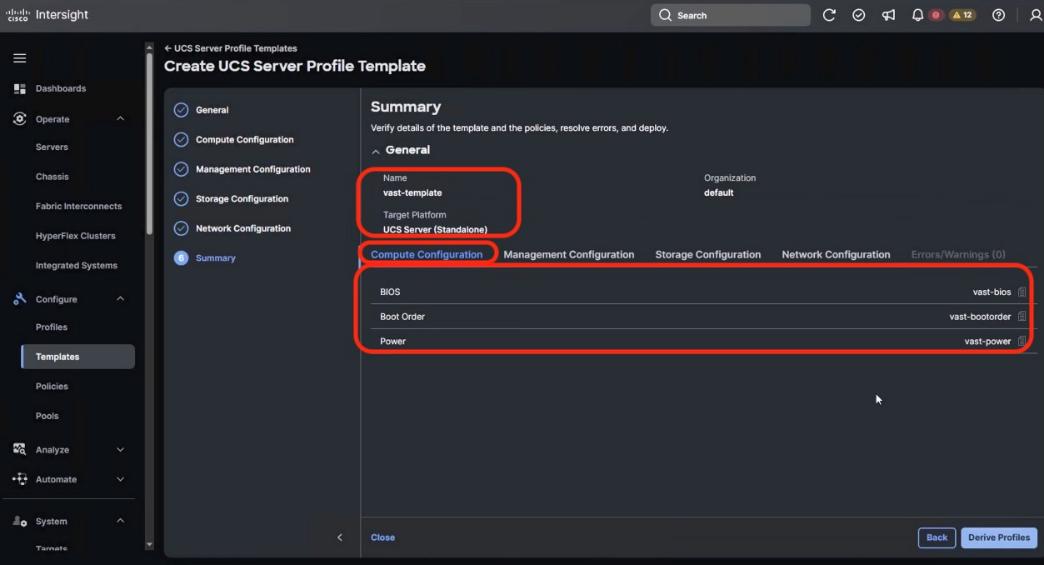

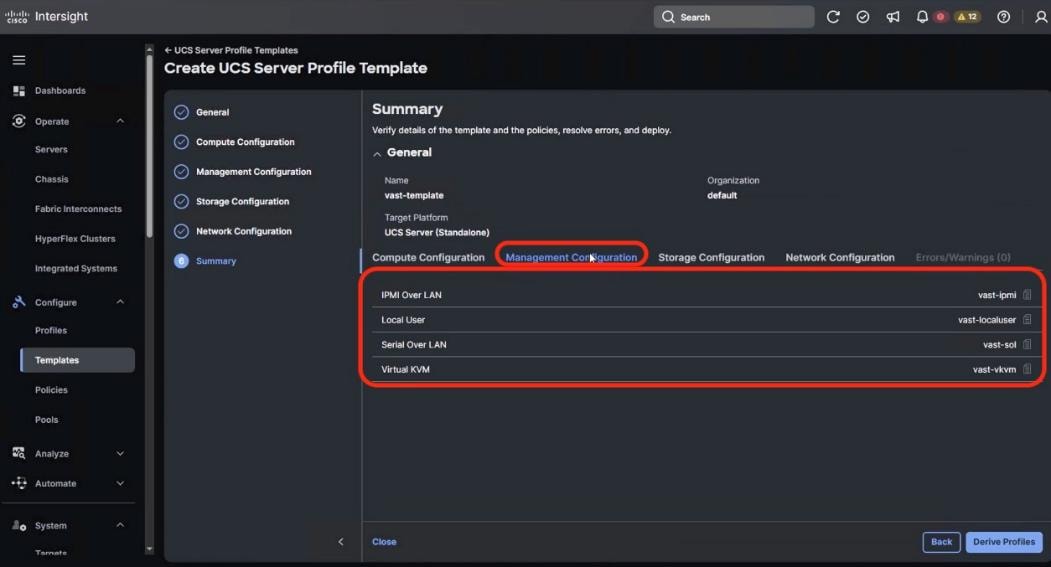

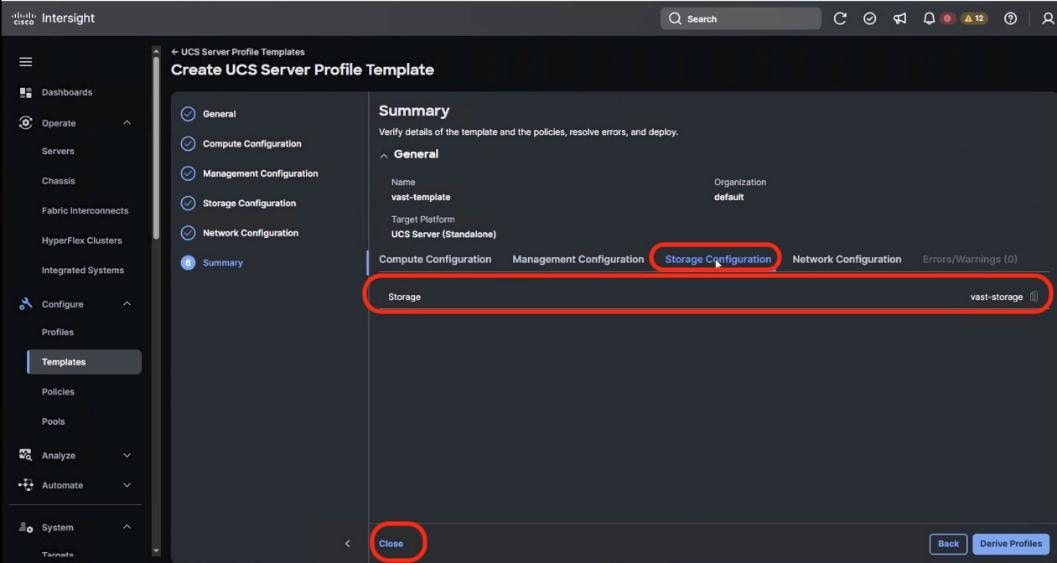

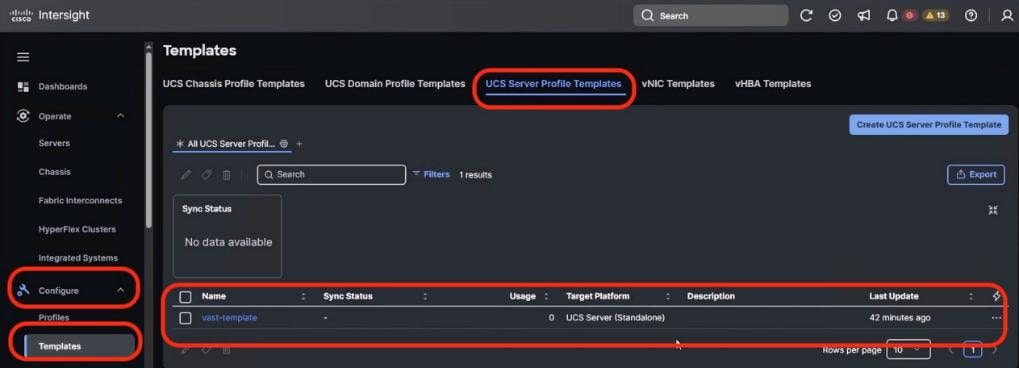

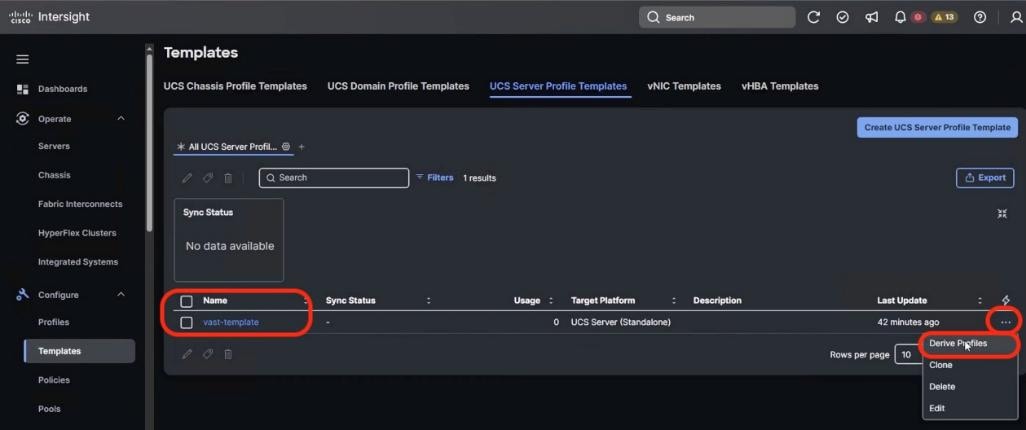

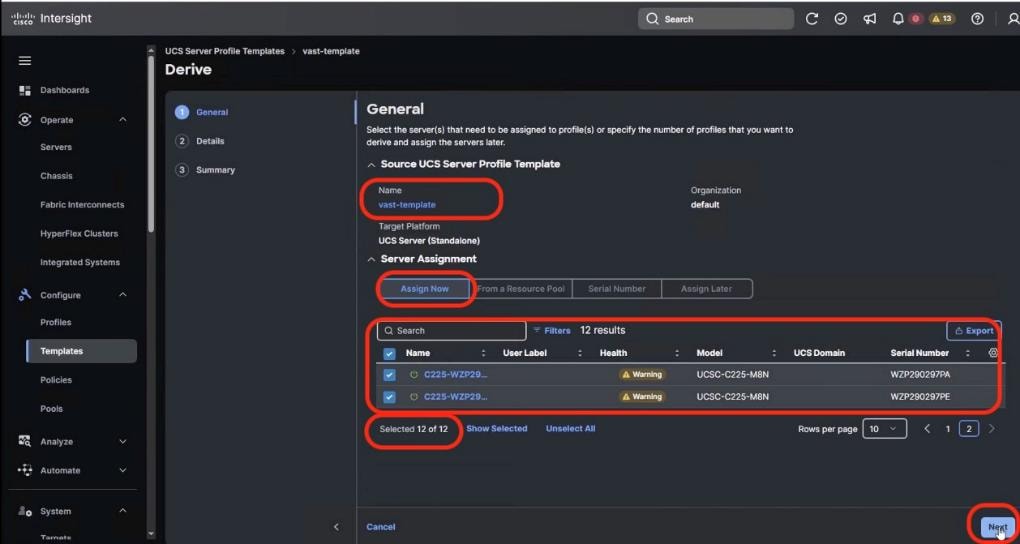

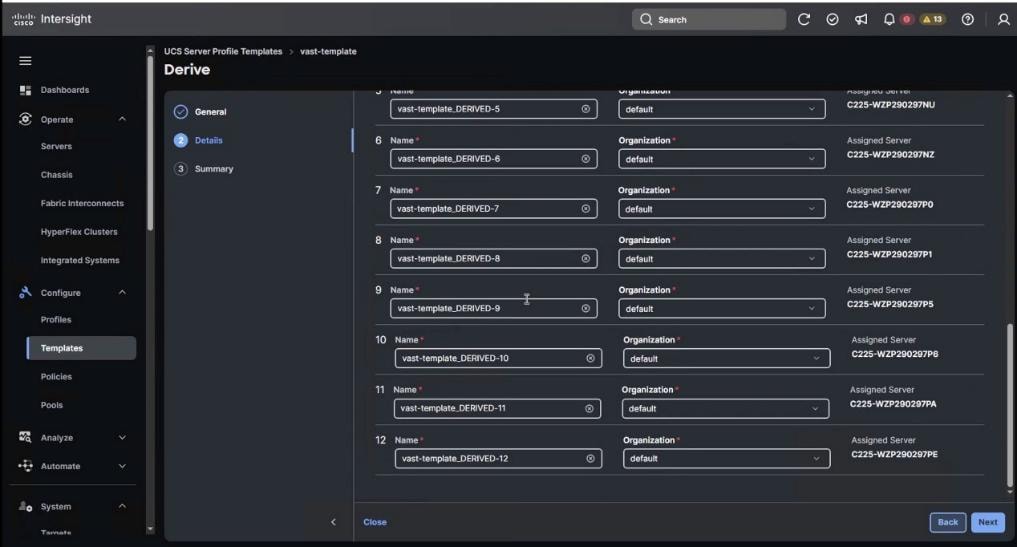

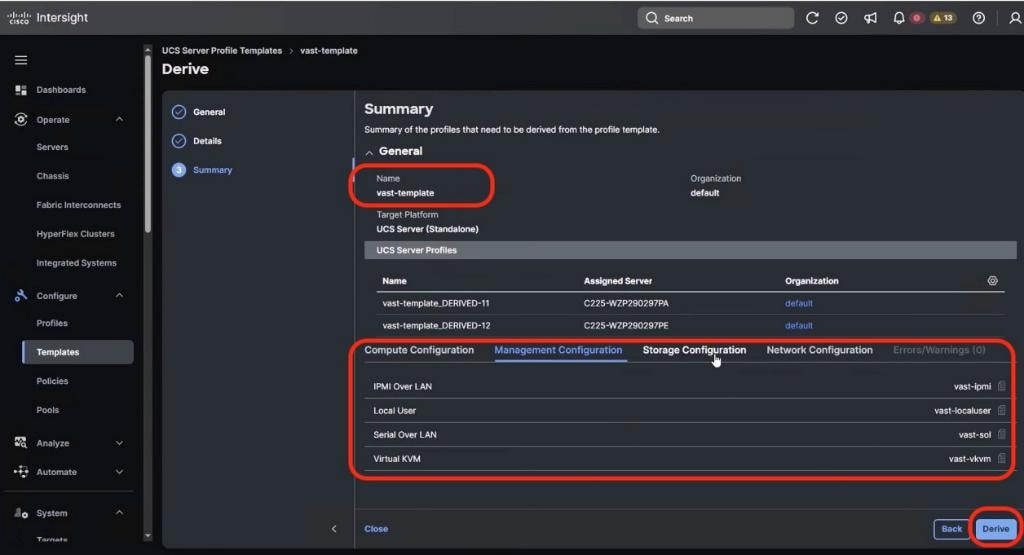

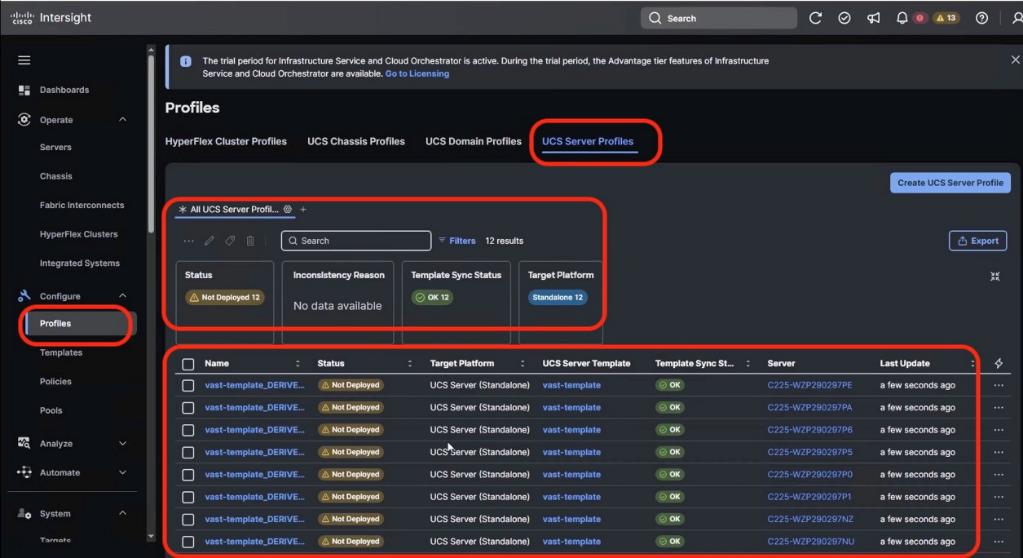

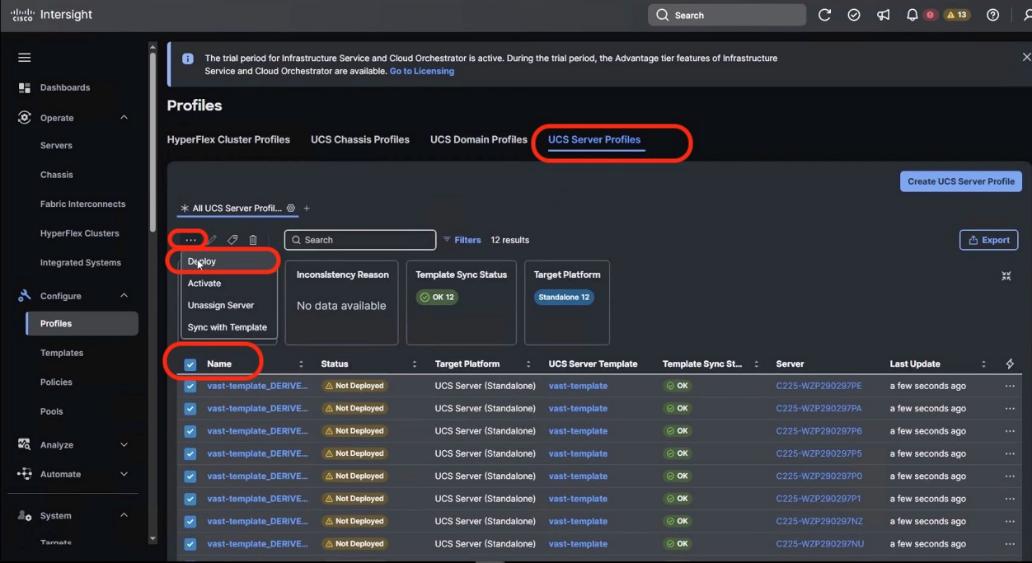

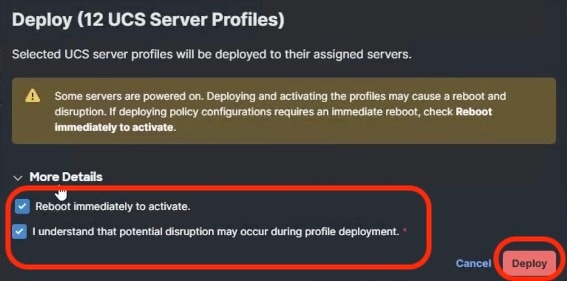

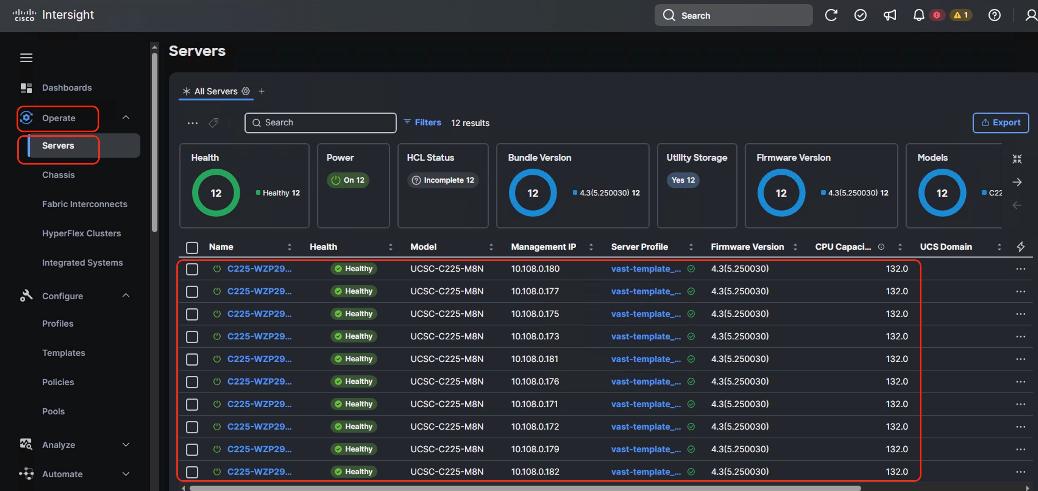

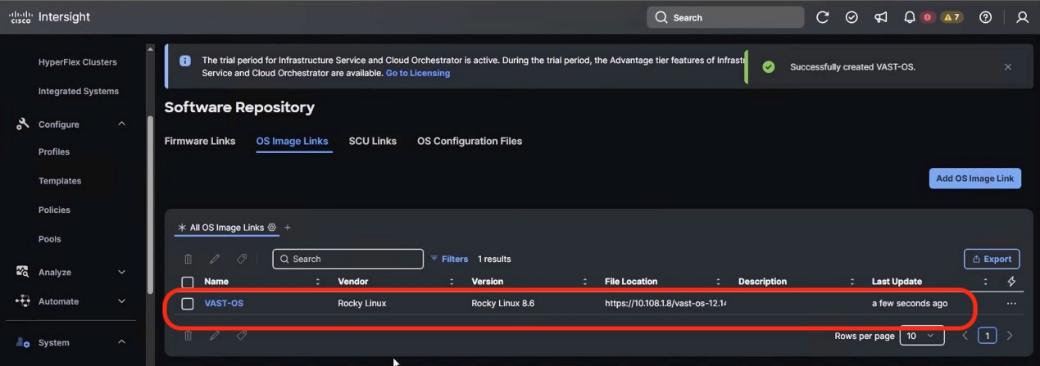

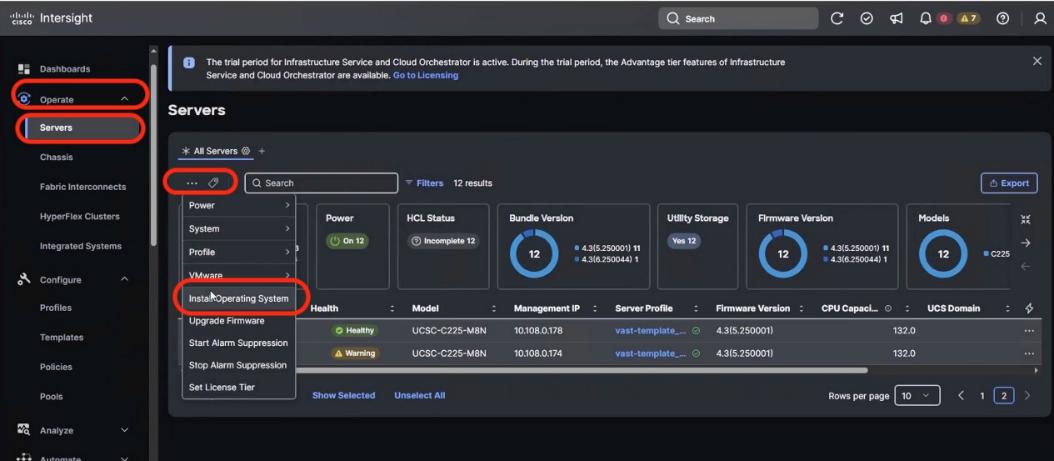

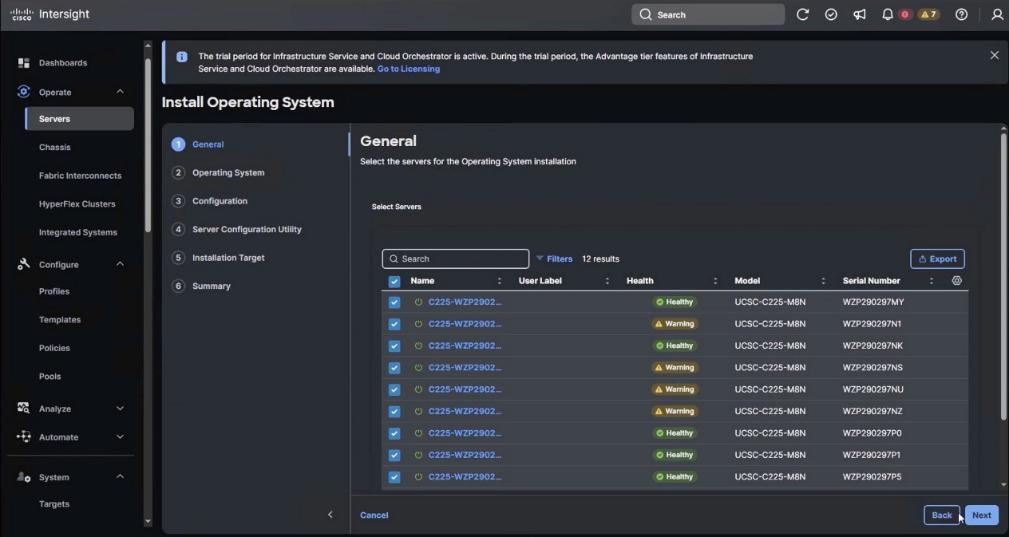

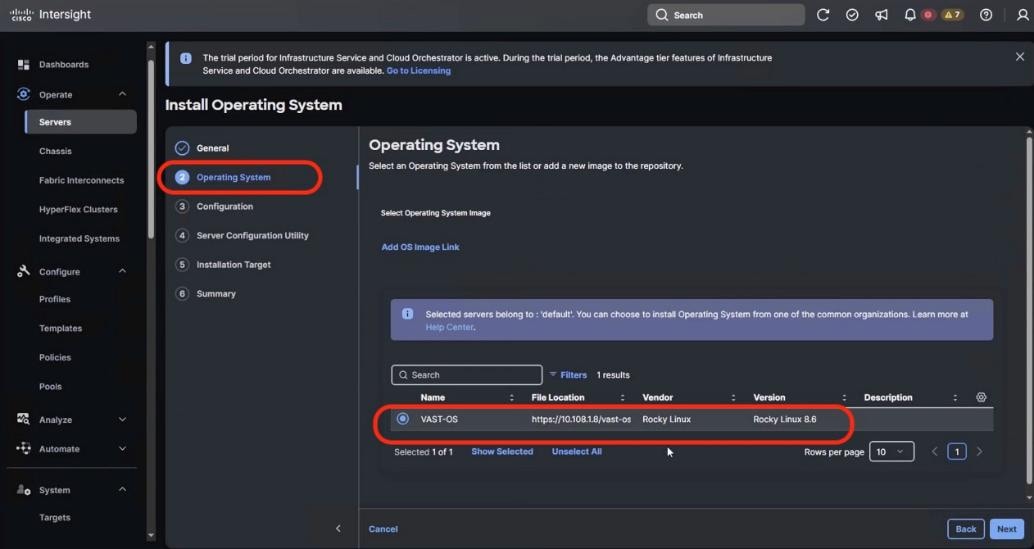

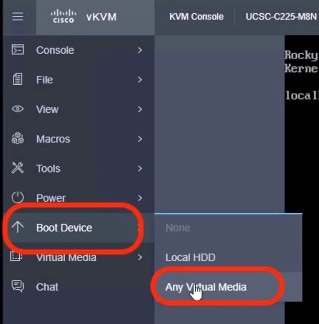

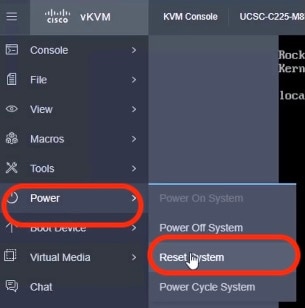

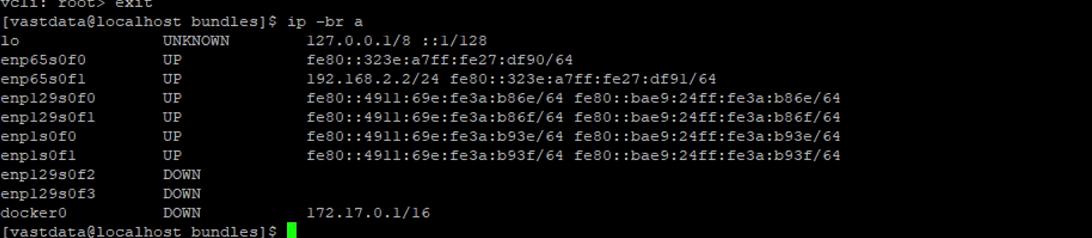

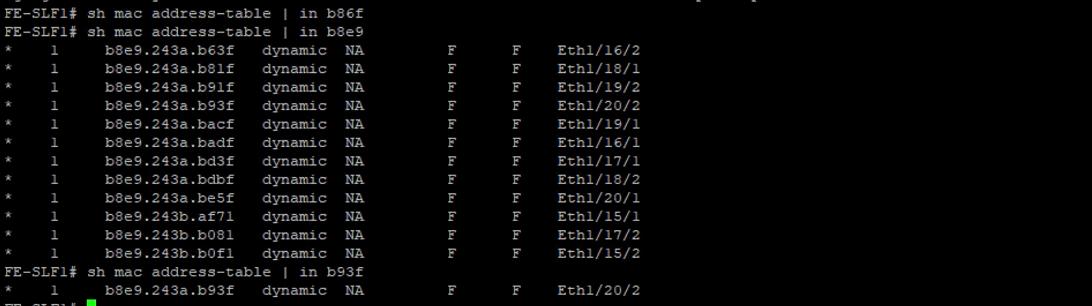

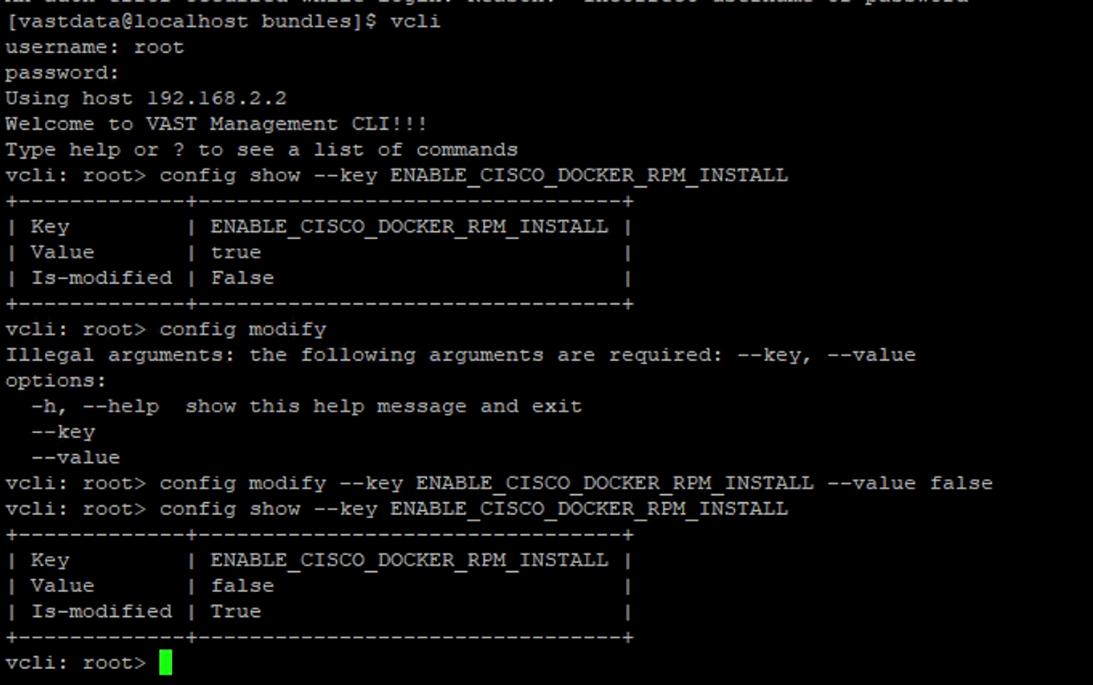

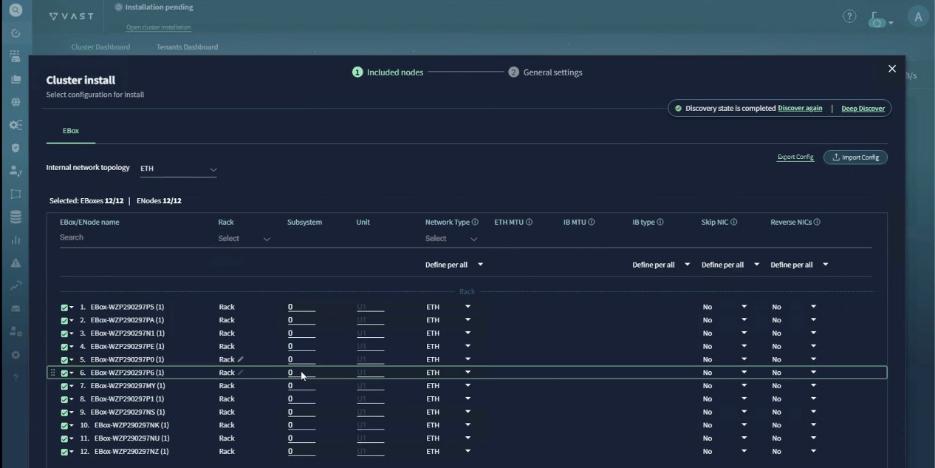

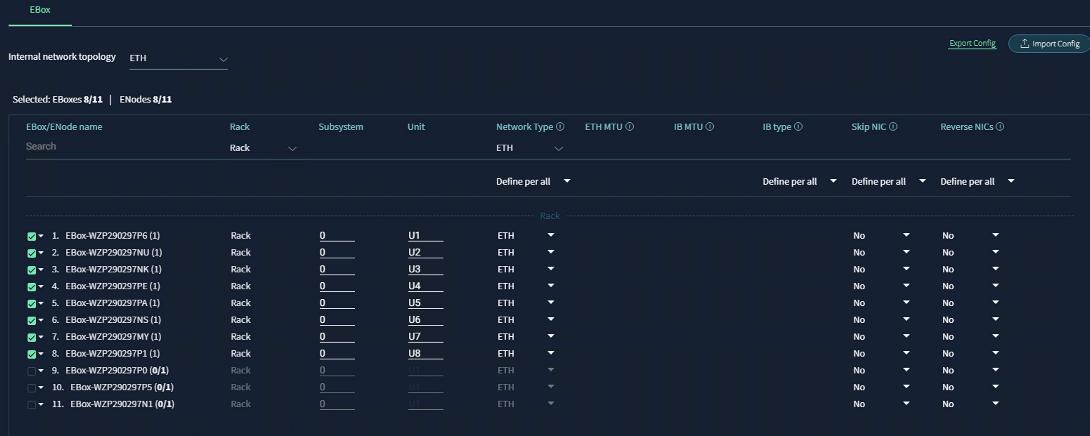

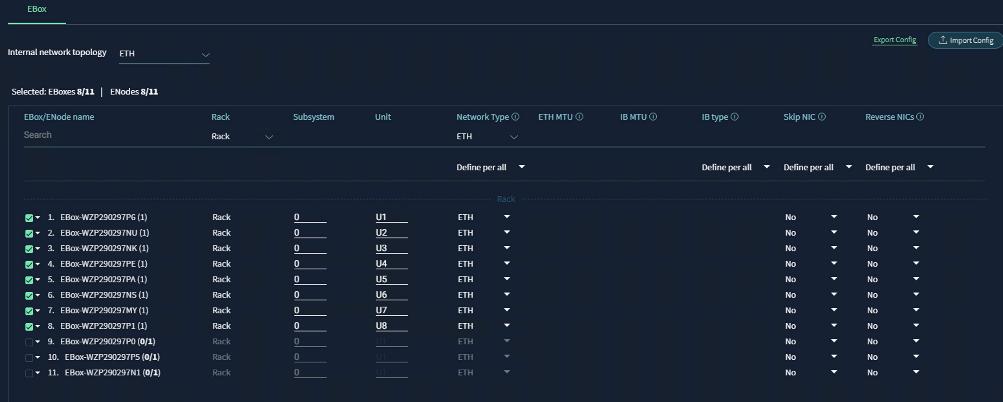

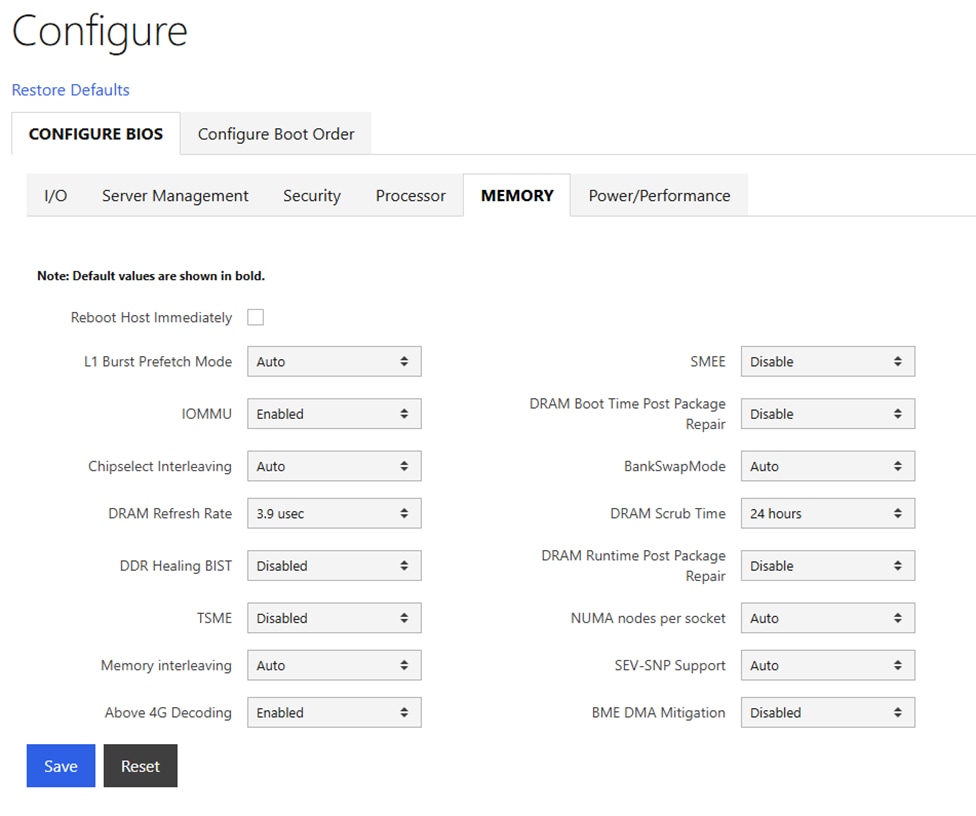

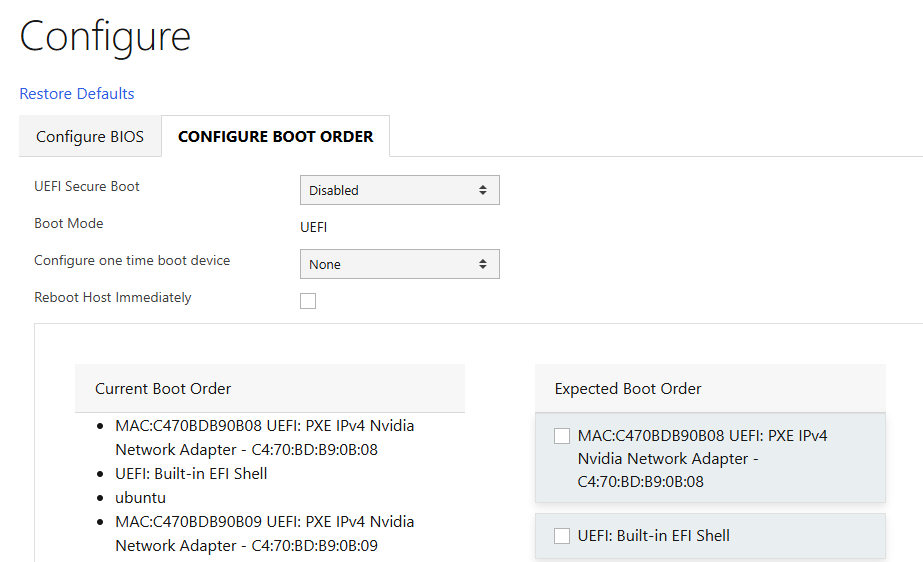

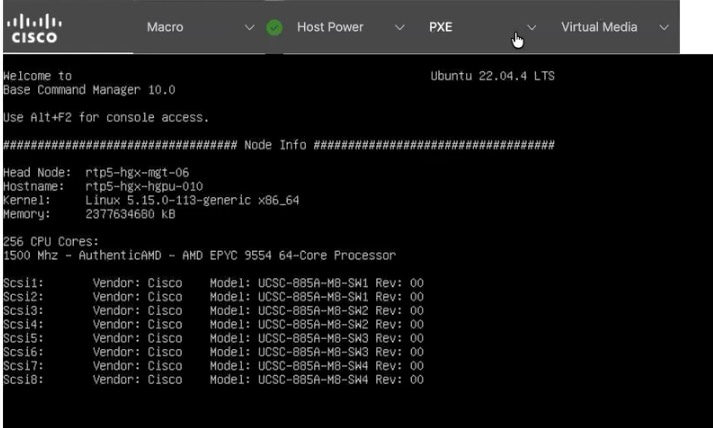

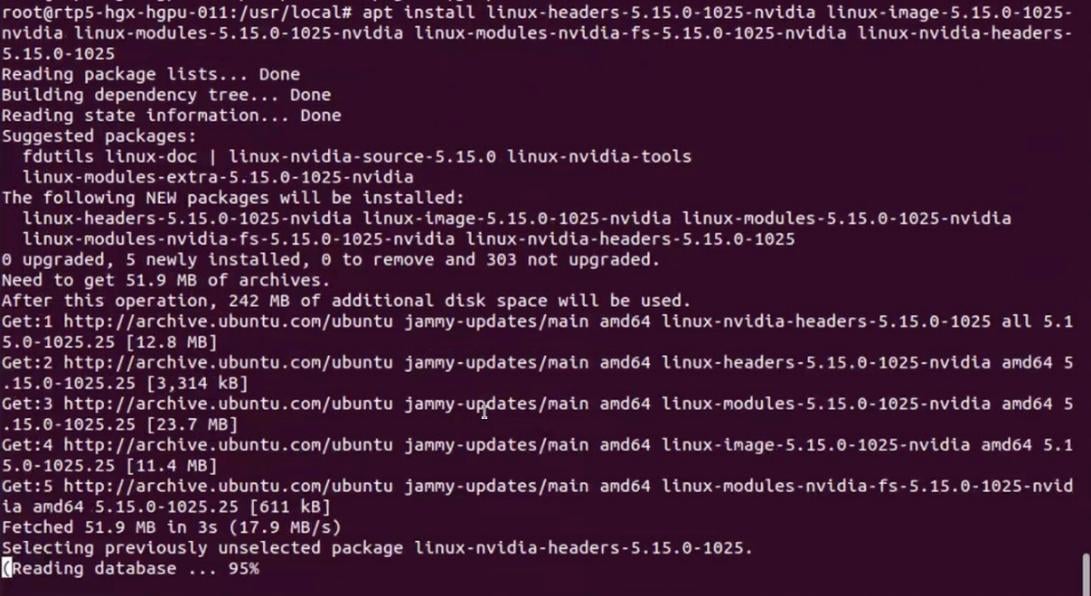

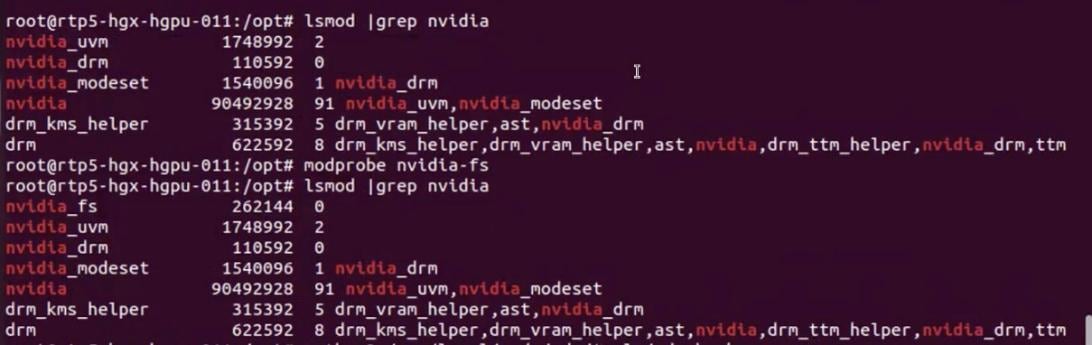

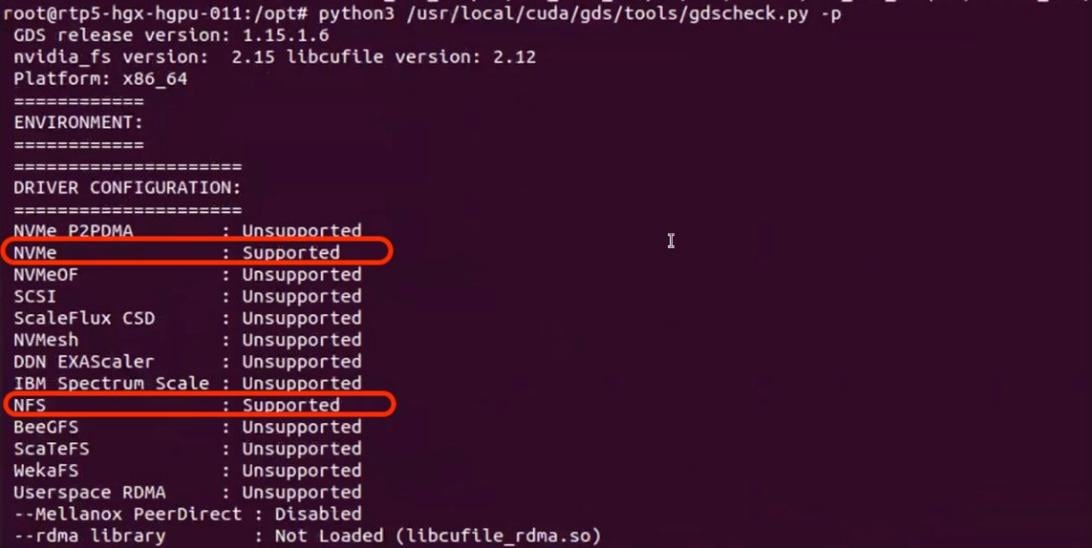

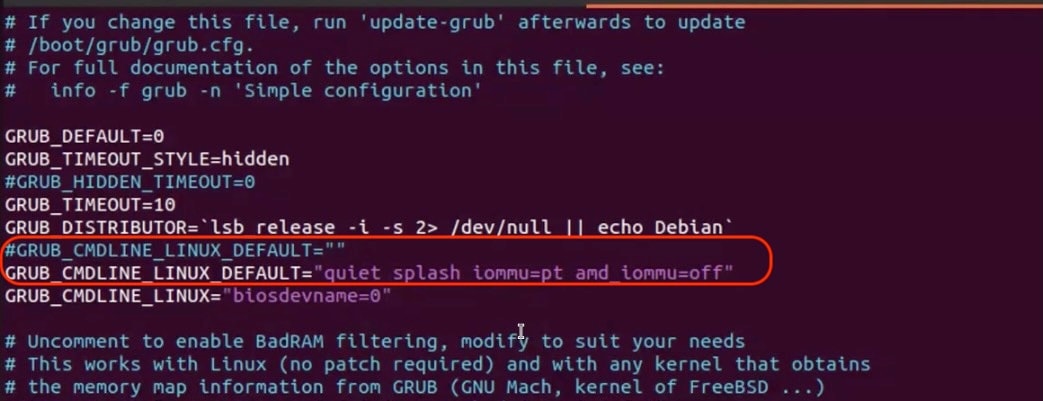

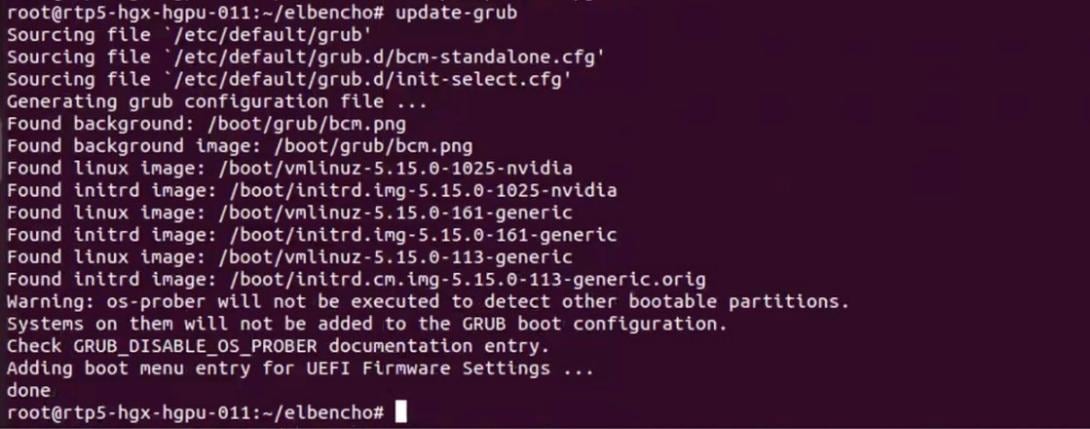

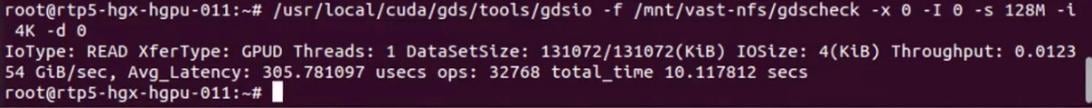

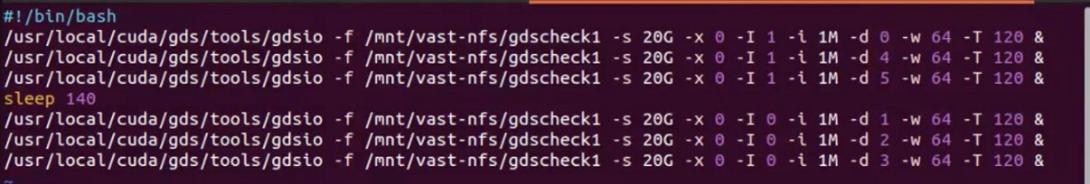

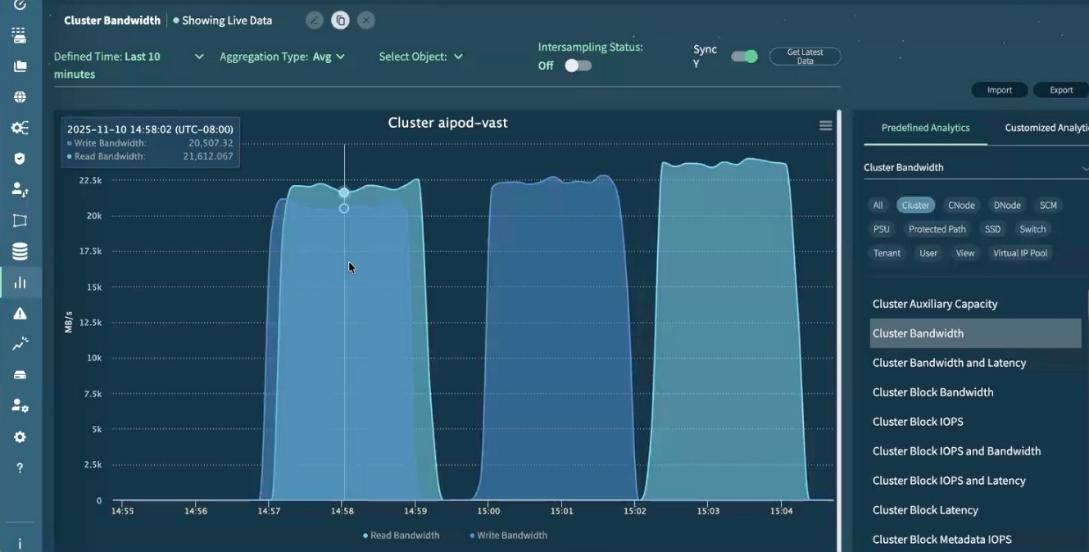

Do you want to enforce secure password standard (yes/no) [y]: Enter