Support for Mongo and CDL

Feature History

| Feature Name | Release Information | Description |
|---|---|---|
| Subscriber migration to multiple SPR DB replica sets | 2025.02.0 | This feature migrates data from CPS 7.5 to CPC, distributing records across multiple SPR databases. It extracts data from CPS 7.5, restores it to SPR1 in CPC, and assigns hash values that distribute subscribers across SPR1, SPR2, and SPR3. |
| Mongo 7.0 Support for Subscriber Profile Repository (SPR) | 2025.01.0 | cnAAA provides support for storing Subscriber Profile Repository (SPR) information in Mongo 7.0. The subscriber information can be created, updated, or deleted through SOAP unified API calls. Mongo 7.0 is deployed as a replica set, with replica set members on local and remote sites. MongoDB replicates the data across sites, providing geo-redundancy and availability if any one of the sites fails. |
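The migration description says that subscribers are assigned hash values that spread them across SPR1, SPR2, and SPR3. It does not name the hash function or the subscriber key. The following Python sketch shows the general idea of stable hash-based placement. It assumes SHA-256 over a subscriber key string, taken modulo the number of databases. The function names and the key format are hypothetical and do not describe the actual cnAAA migration logic.

```python
import hashlib
from collections import Counter

# The three SPR databases named in the feature description.
SPR_DATABASES = ("SPR1", "SPR2", "SPR3")


def spr_for_subscriber(subscriber_key: str, databases=SPR_DATABASES) -> str:
    """Map a subscriber key to one SPR database with a stable hash.

    Assumption (hypothetical): SHA-256 of the key, first 8 bytes as an
    integer, modulo the number of databases. The real hash and key used
    by the migration are not documented here.
    """
    digest = hashlib.sha256(subscriber_key.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(databases)
    return databases[bucket]


def distribution(keys, databases=SPR_DATABASES) -> Counter:
    """Count how many of the given keys land on each SPR database."""
    return Counter(spr_for_subscriber(k, databases) for k in keys)
```

The mapping depends only on the key and the number of databases, so the same subscriber always lands on the same SPR. Under this simple modulo scheme, adding or removing a database changes the modulus and remaps most keys.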

Overview

cnAAA provides support for storing Subscriber Profile Repository (SPR) information in Mongo 7.0. The subscriber information can be created, updated, or deleted through SOAP unified API calls.

Mongo 7.0 is deployed as a replica set, with replica set members on local and remote sites. MongoDB replicates the data across sites, providing geo-redundancy and availability if any one of the sites fails.
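Whether the replica set keeps a primary after a site failure depends on where its voting members are placed. MongoDB elects a primary only when a strict majority of voting members can still reach each other. The Python sketch below checks each site in turn. It assumes that every member has one vote, which is the MongoDB default. The site names, member placements and function names are hypothetical.

```python
from collections import Counter


def has_majority(voting_members: int, surviving: int) -> bool:
    """MongoDB needs a strict majority of voting members to elect a primary."""
    return surviving >= voting_members // 2 + 1


def survivable_site_failures(member_sites: dict[str, str]) -> dict[str, bool]:
    """For each site, report whether a primary can still be elected if that site fails.

    member_sites maps member name -> site name; every member is assumed to vote.
    """
    members_per_site = Counter(member_sites.values())
    total = len(member_sites)
    return {
        site: has_majority(total, total - count)
        for site, count in members_per_site.items()
    }
```

For example, two members on one site and a third on another leave no majority if the larger site fails. Two data members and an arbiter spread across three sites survive the loss of any single site.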

Configure the SPR MongoDB replica set

Install the SPR MongoDB replica set in the cnAAA Kubernetes cluster namespace. Use the node IP addresses of the master nodes as the replica member host addresses. Make sure these hosts are reachable over the network from the cnAAA Engine pods.

SPR DB replica set configurations

- MongoDB replica set members use the host network to provide network connectivity across sites.

- Maintain host network reachability across mated-pair sites to prevent database initialization and replication issues.
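Replica set members use the host network. Before you initialize the replica set, you can run a TCP connection test from each site to every member host and port to confirm reachability. This Python sketch uses only the standard library, and the helper names are hypothetical. A successful connection shows only that the port accepts TCP connections. It does not show that MongoDB is healthy.

```python
import socket


def is_reachable(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, unreachable networks, and timeouts.
        return False


def reachability_report(members: dict[str, tuple[str, int]], timeout: float = 2.0) -> dict[str, bool]:
    """Check every replica member (name -> (host, port)) and report reachability."""
    return {
        name: is_reachable(host, port, timeout)
        for name, (host, port) in members.items()
    }
```

For example, `reachability_report({"sdb-rs1-s1-m1": ("10.192.1.22", 65001)})` checks one member from the sample replica set configuration in this section. Run it from a host at each mated-pair site.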

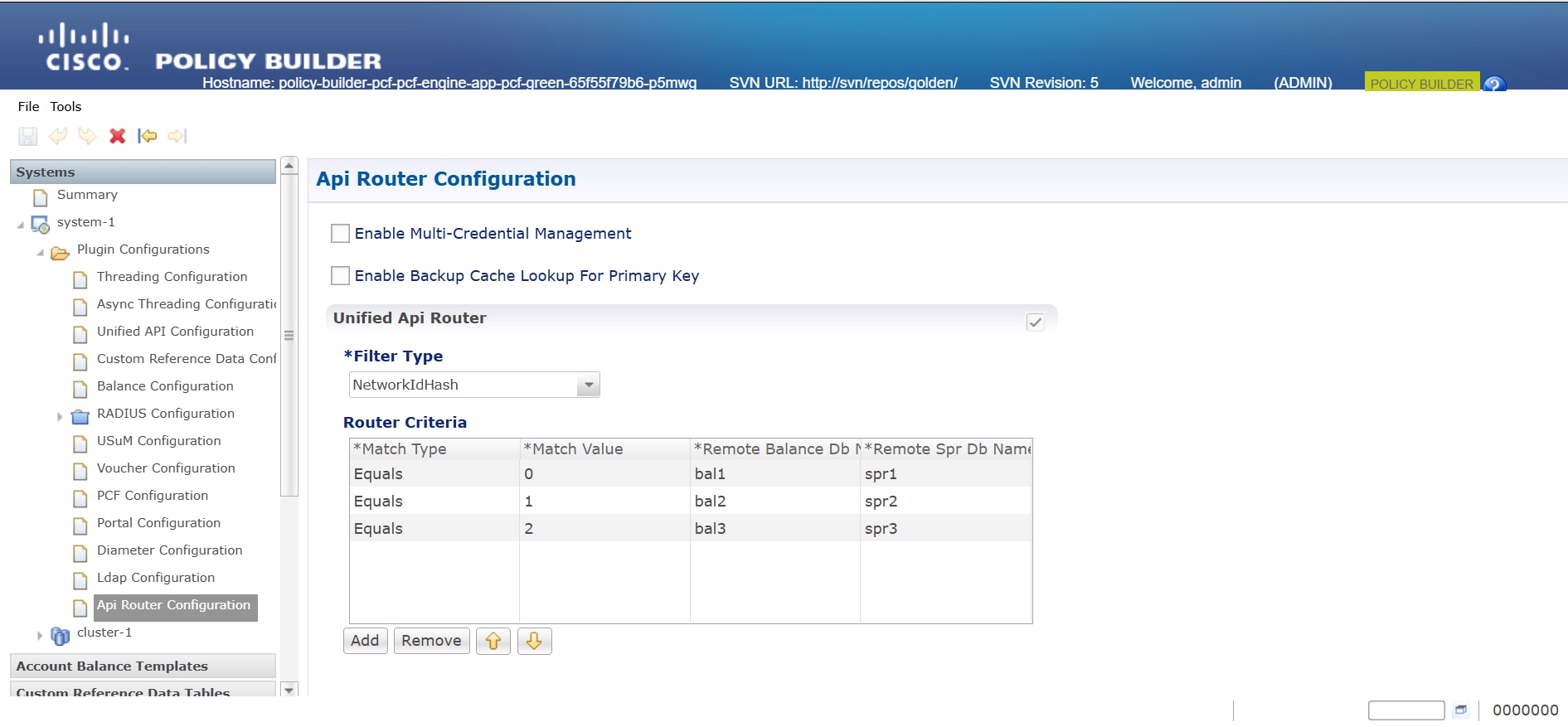

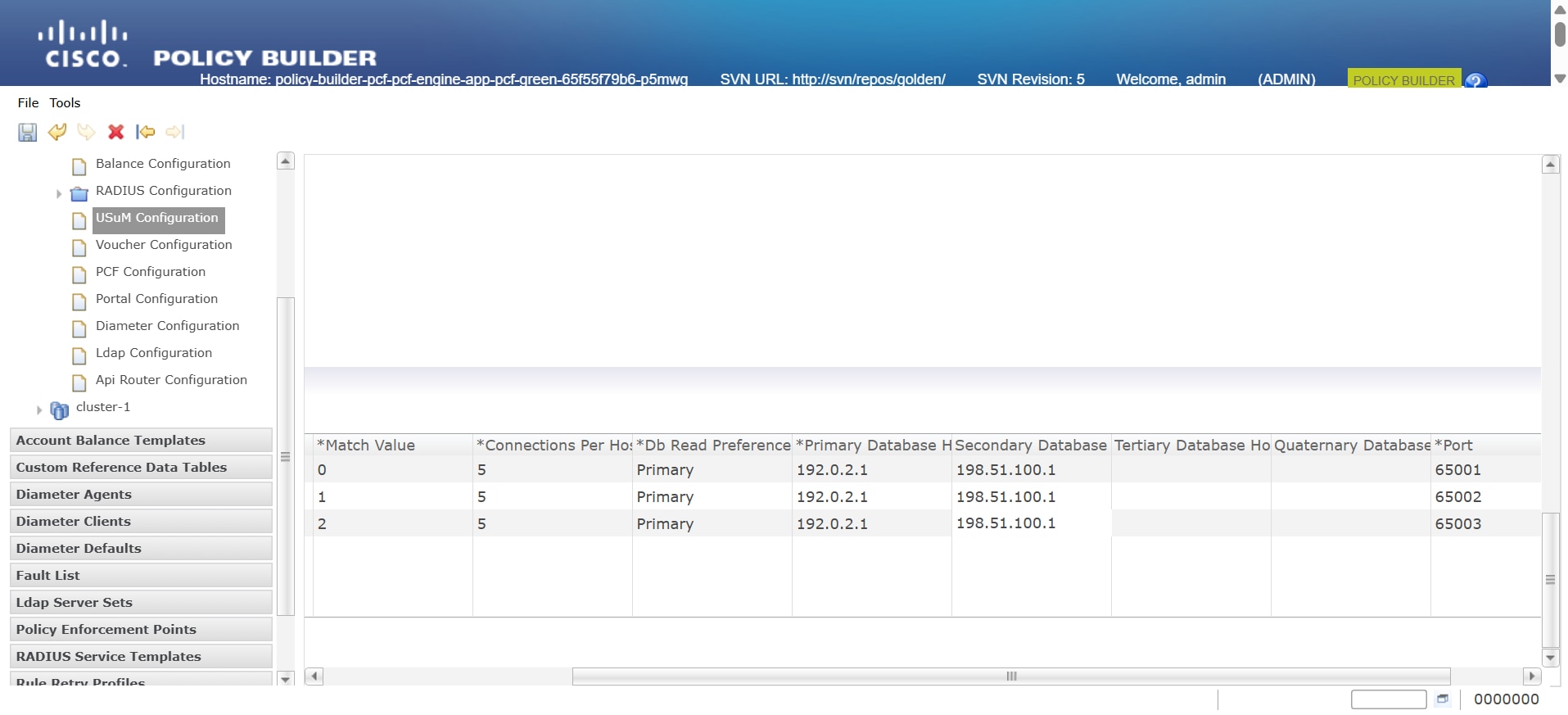

Configure Policy Builder and remote database with USuM configuration

To set up the Policy Builder and configure the remote Subscriber Profile Repository (SPR) database using the USuM configuration tab, follow these steps:

Configure Policy Builder

Set up the Policy Builder with primary and secondary replica members, including their port numbers, under Plugin Configurations > USuM Configuration.
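Policy Builder takes the primary and secondary replica members as host and port entries. To test the same members from a standalone MongoDB client, you can write them as a standard MongoDB connection string with a seed list and the `replicaSet` option. This Python sketch assumes that the replica set name matches the `replica-name` value (`sdb-subscriber1`) from the sample configuration in this section. The function name is hypothetical, and this page does not say whether cnAAA builds such a string internally. The string contains no credentials because Mongo authentication is not supported in this release.

```python
from urllib.parse import quote


def replica_set_uri(members: list[tuple[str, int]], replica_set: str) -> str:
    """Build a MongoDB connection string that lists every replica member as a seed."""
    if not members:
        raise ValueError("at least one replica member is required")
    for host, port in members:
        if not 0 < port < 65536:
            raise ValueError(f"invalid port {port} for host {host}")
    seeds = ",".join(f"{host}:{port}" for host, port in members)
    return f"mongodb://{seeds}/?replicaSet={quote(replica_set)}"
```

With the two data members from the sample configuration, this returns `mongodb://10.192.1.22:65001,10.192.1.23:65001/?replicaSet=sdb-subscriber1`.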

Configure Remote Database with USuM Configuration

Procedure

| Step | Action |
|---|---|
| Step 1 | Access Plugin Configurations: In the left pane of the Policy Builder page, click Plugin Configurations. |
| Step 2 | Select USuM Configuration: Choose the USuM Configuration tab. |
| Step 3 | Enter database values: Enter the mandatory database values required for the configuration. |

Configure the MongoDB tuning

To optimize MongoDB performance for cnAAA, configure the following database tuning parameters with the required values:

```
db
  global-settings
    db-tunings
      oplog-size-mb 3072
      wired-tiger-cache-size-gb 3
      slowms 500
      concurrent-transactions-read 2
      concurrent-transactions-write 3
```

Notes:

- oplog-size-mb: Size of the oplog, in MB. The value 3072 allocates 3 GB for the oplog.

- wired-tiger-cache-size-gb: Maximum size, in GB, of the internal cache that WiredTiger uses for all data.

- slowms: Slow operation threshold, in milliseconds. Operations that take longer than this value are treated as slow.

- concurrent-transactions-read: Maximum number of concurrent read transactions (read tickets) allowed into the storage engine.

- concurrent-transactions-write: Maximum number of concurrent write transactions (write tickets) allowed into the storage engine.
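Before you commit the tuning values, a small pre-check can catch missing or non-positive entries. It can also confirm unit conversions, such as 3072 MB = 3 GB for the oplog. This Python sketch is hypothetical because cnAAA does not provide these functions. The rule that every value must be a positive number is an assumption, not a documented constraint.

```python
# Values from the db global-settings db-tunings example in this section.
DB_TUNINGS = {
    "oplog-size-mb": 3072,
    "wired-tiger-cache-size-gb": 3,
    "slowms": 500,
    "concurrent-transactions-read": 2,
    "concurrent-transactions-write": 3,
}

REQUIRED_KEYS = tuple(DB_TUNINGS)


def validate_tunings(tunings: dict) -> list[str]:
    """Return a list of problems; an empty list means every parameter is a positive number."""
    problems = []
    for key in REQUIRED_KEYS:
        value = tunings.get(key)
        if value is None:
            problems.append(f"{key}: missing")
        elif isinstance(value, bool) or not isinstance(value, (int, float)) or value <= 0:
            problems.append(f"{key}: expected a positive number, got {value!r}")
    return problems


def oplog_size_gb(tunings: dict) -> float:
    # 1 GB = 1024 MB, so 3072 MB corresponds to 3 GB.
    return tunings["oplog-size-mb"] / 1024


def is_slow_operation(duration_ms: float, tunings: dict) -> bool:
    # An operation counts as slow when it runs longer than the slowms threshold.
    return duration_ms > tunings["slowms"]
```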

Note

Mongo authentication is currently not supported in this release.

Configure Mongo Replica Sets

Prerequisite: Before configuring the replica set, create the labels for the nodes.

To configure Mongo replica sets using cnAAA, follow these steps:

- Provision Mongo replica sets: Configure the replica set settings and assign database labels to the Kubernetes nodes so that replica set members spawn on the appropriate master nodes.

- Sample configuration: The following configuration sets up a database replica set with an arbiter and two data members. It specifies their roles, priorities, and network settings.

```
db scdb replica-name sdb-subscriber1
  port 65001
  interface vlan2400
  resource cpu limit 6000
  resource memory limit 112000
  replica-set-label key smi.cisco.com/node-type
  replica-set-label value oam
  member-configuration member sdb-rs1-arbiter
    host 10.192.1.24
    arbiter true
    site local
  exit
  member-configuration member sdb-rs1-s1-m1
    host 10.192.1.22
    arbiter false
    priority 102
    site local
  exit
  member-configuration member sdb-rs1-s1-m2
    host 10.192.1.23
    arbiter false
    priority 101
    site local
  exit
exit
```
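The sample configuration defines one arbiter and two data-bearing members with priorities 102 and 101. In MongoDB, the healthy data-bearing member with the highest priority is preferred as primary. MongoDB also recommends an odd number of voting members. This Python sketch encodes these checks for a member list. The `Member` class and the helper names are hypothetical, and the sketch assumes that every member votes.

```python
from dataclasses import dataclass


@dataclass
class Member:
    name: str
    host: str
    arbiter: bool
    priority: int = 0  # assumption: 0 when the configuration sets no priority (arbiter)
    site: str = "local"


# Members from the sample sdb-subscriber1 configuration above.
SAMPLE_MEMBERS = [
    Member("sdb-rs1-arbiter", "10.192.1.24", arbiter=True),
    Member("sdb-rs1-s1-m1", "10.192.1.22", arbiter=False, priority=102),
    Member("sdb-rs1-s1-m2", "10.192.1.23", arbiter=False, priority=101),
]


def member_config_problems(members: list[Member]) -> list[str]:
    """Return human-readable problems found in a replica set member list."""
    problems = []
    names = [m.name for m in members]
    hosts = [m.host for m in members]
    if len(set(names)) != len(names):
        problems.append("duplicate member names")
    # All members share the replica set port, so a repeated host would clash.
    if len(set(hosts)) != len(hosts):
        problems.append("duplicate member hosts")
    if all(m.arbiter for m in members):
        problems.append("no data-bearing member")
    if len(members) % 2 == 0:
        problems.append("even number of voting members; MongoDB recommends an odd number")
    return problems


def preferred_primary(members: list[Member]) -> str:
    """Name of the data-bearing member with the highest priority."""
    data_members = [m for m in members if not m.arbiter]
    if not data_members:
        raise ValueError("replica set has no data-bearing member")
    return max(data_members, key=lambda m: m.priority).name
```

For the sample configuration, `member_config_problems` returns an empty list, and `preferred_primary` returns `sdb-rs1-s1-m1`.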

For more information on replica sets, see Mobile Policy Common Commands in the Ultra Cloud Core Policy and Charging, Release 2025.01.0 - CLI Reference Guide.

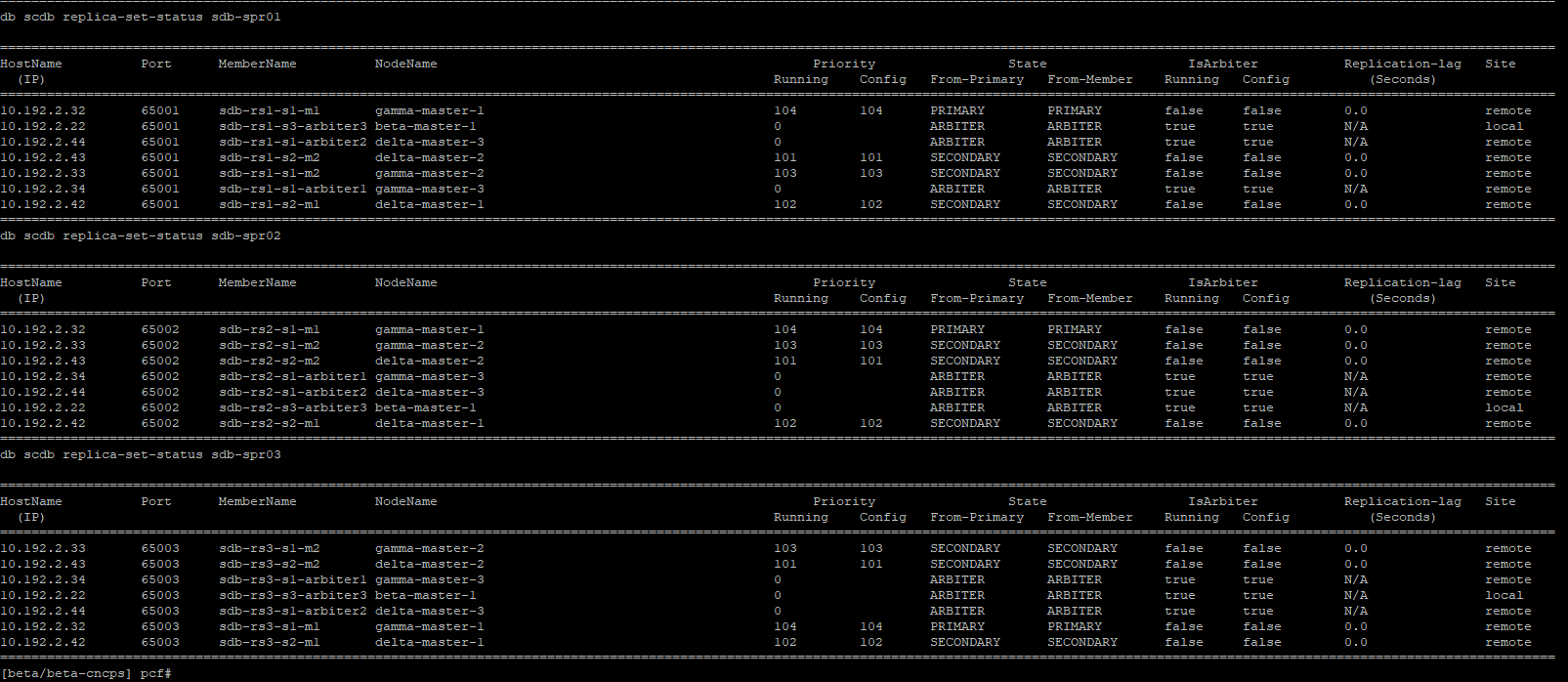

Verification

show db scdb replica-set-status-

Verify the replica set status: Ensure that the replica set initializes correctly and that each member is assigned the correct role (primary, secondary, or arbiter).

```
cloud-user@alpha-master-1:~$ kubectl get pods -A|grep db-scdb
<namespace> db-scdb-sdb-subscriber1-0 1/1 Running 0 7d5h
<namespace> db-scdb-sdb-subscriber1-1 1/1 Running 0 7d5h
<namespace> db-scdb-sdb-subscriber1-2 1/1 Running 0 7d5h
cloud-user@alpha-master-1:~$
```
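To automate the pod check, you can parse the kubectl output and test each db-scdb pod for a Running status with all containers ready. This Python sketch assumes the default column layout of `kubectl get pods -A` (NAMESPACE, NAME, READY, STATUS, RESTARTS, AGE), and the function names are hypothetical. A Running pod does not by itself confirm the replica set roles. The replica set status command above reports those roles.

```python
def parse_pods(kubectl_output: str, prefix: str = "db-scdb-") -> dict[str, tuple[str, str]]:
    """Map pod name -> (READY, STATUS) for pods whose name starts with prefix."""
    pods = {}
    for line in kubectl_output.splitlines():
        fields = line.split()
        # Expected columns: NAMESPACE NAME READY STATUS RESTARTS AGE
        if len(fields) >= 4 and fields[1].startswith(prefix):
            pods[fields[1]] = (fields[2], fields[3])
    return pods


def unhealthy_pods(kubectl_output: str, prefix: str = "db-scdb-") -> list[str]:
    """Return matching pods that are not Running or not fully ready (for example 0/1)."""
    problems = []
    for name, (ready, status) in parse_pods(kubectl_output, prefix).items():
        ready_count, _, total = ready.partition("/")
        if status != "Running" or not total or ready_count != total:
            problems.append(name)
    return sorted(problems)
```

For the sample output shown above, `parse_pods` finds three pods and `unhealthy_pods` returns an empty list.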
