These use cases show the configuration and the resulting log behavior for proxy accounting retry messages sent to OCS and PMZ servers.

No Log for Successful First Attempt: The system does not generate a log entry when an accounting message successfully reaches the primary OCS or PMZ server on the first attempt.

radius server-group grp1

servers WB_12100

primary 10.1.43.142

secondary 10.1.43.140

nas-ip 11.11.118.69

timeout-seconds 5

server-type online

retries 3

exit

servers PassiveMZ-12998

primary 10.1.45.171

secondary 10.1.45.170

nas-ip 11.11.118.69

timeout-seconds 5

server-type offline

retries 3

exit

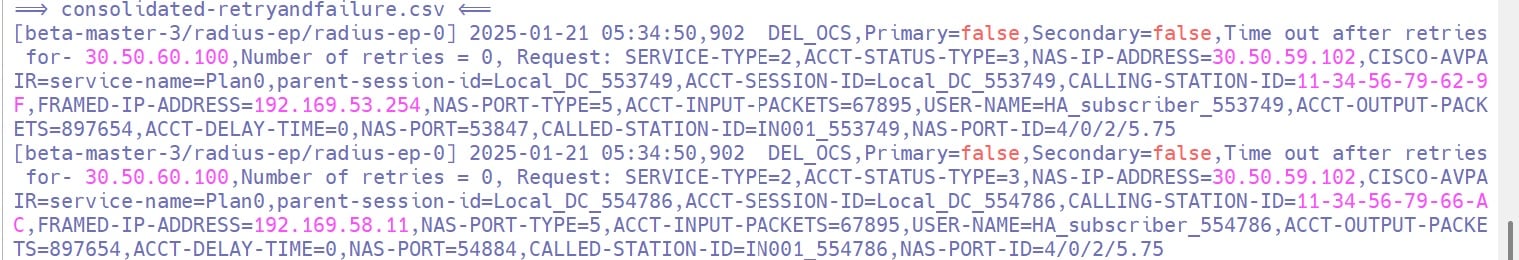

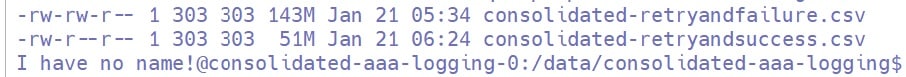

Log for Failure After All Retries: The system generates a log entry in ocs_consolidated-retryandfailure.csv (for OCS) or pmz_consolidated-retryandfailure.csv (for PMZ) when an accounting message fails to reach both the primary and the secondary OCS or PMZ server after all retries.

radius server-group grp1

servers WB_12100

primary 10.1.43.142

secondary 10.1.43.140

nas-ip 11.11.118.69

timeout-seconds 5

server-type online

retries 3

exit

servers PassiveMZ-12998

primary 10.1.45.171

secondary 10.1.45.170

nas-ip 11.11.118.69

timeout-seconds 5

server-type offline

retries 3

exit

This is a sample log entry for the OCS server in the Log for Failure After All Retries scenario.

2025-06-16 18:13:25,940 WB_12100,Primary=false,Secondary=false,Time out after retries for- 10.1.43.140,

Number of retries = 3, Request: FRAMED-IP-ADDRESS=192.168.3.10,CALLING-STATION-ID=0005.9A3C.2A02,NAS-PORT-TYPE=5,

USER-PASSWORD=xxxxxxxxx,USER-NAME=0005.9A3C.2A02,ACCT-STATUS-TYPE=1,NAS-IP-ADDRESS=11.11.118.69,

NAS-PORT=0,CISCO-AVPAIR=parent-session-id=00011,service-name=A0F0050M050M000005MQ,portbundle=enable,

ACCT-SESSION-ID=child_00011,NAS-PORT-ID=0/0/0/701

This is a sample log entry for the PMZ server in the Log for Failure After All Retries scenario.

2025-06-16 18:13:15,960 PassiveMZ-12998,

Primary=false,Secondary=false,Time out after retries for- 10.1.45.170,Number of retries = 3,

Request: FRAMED-IP-ADDRESS=192.168.3.10,CALLING-STATION-ID=0005.9A3C.2A02,

NAS-PORT-TYPE=5,USER-PASSWORD=xxxxxxxxx,USER-NAME=0005.9A3C.2A02,ACCT-STATUS-TYPE=1,

NAS-IP-ADDRESS=11.11.118.69,NAS-PORT=0,CISCO-AVPAIR=parent-session-id=00011,

service-name=A0F0050M050M000005MQ,portbundle=enable,

ACCT-SESSION-ID=child_00011,NAS-PORT-ID=0/0/0/701

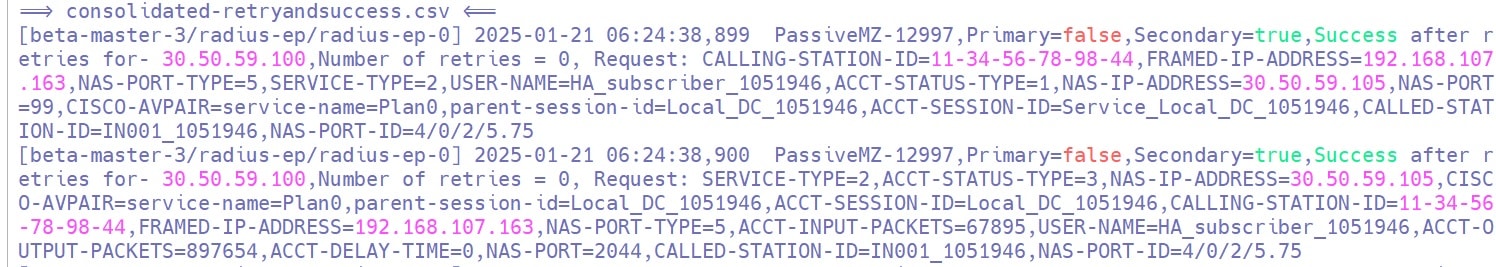
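The layout of the sample entries above can be illustrated with a small parser. This is a minimal sketch, not a tool shipped with the product. It assumes that each entry occupies a single physical line in the CSV file (the samples here are wrapped for display), that the field order matches the samples, and that upper-case names in the `Request:` part are RADIUS attributes while lower-case pairs belong to the `CISCO-AVPAIR` value. The function name `parse_retry_entry` and the dictionary keys are hypothetical.

```python
import re

# Pattern derived only from the sample entries in this section (an assumption,
# not a published format specification).
ENTRY_RE = re.compile(
    r"^(?P<timestamp>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2},\d{3})\s+"
    r"(?P<server>[^,]+),\s*"
    r"Primary=(?P<primary>true|false),\s*"
    r"Secondary=(?P<secondary>true|false),\s*"
    r"(?P<status>.*?)\s*(?:-\s*)?for\s*-\s*(?P<target>[\d.]+),\s*"
    r"Number of retries = (?P<retries>\d+),\s*"
    r"Request:\s*(?P<request>.*)$"
)


def parse_retry_entry(line: str) -> dict:
    """Split one retry log entry into its header fields and request attributes."""
    match = ENTRY_RE.match(line.strip())
    if match is None:
        raise ValueError("line does not look like a retry log entry")
    fields = match.groupdict()
    fields["primary"] = fields["primary"] == "true"
    fields["secondary"] = fields["secondary"] == "true"
    fields["retries"] = int(fields["retries"])
    # Heuristic: split only at commas that precede an UPPER-CASE attribute name,
    # so the comma-separated CISCO-AVPAIR value stays in one piece.
    attributes = {}
    for part in re.split(r",(?=[A-Z][A-Z0-9-]*=)", fields.pop("request")):
        name, _, value = part.partition("=")
        attributes[name.strip()] = value.strip()
    fields["attributes"] = attributes
    return fields


# The OCS failure sample from this section, joined into one line.
SAMPLE = (
    "2025-06-16 18:13:25,940 WB_12100,Primary=false,Secondary=false,"
    "Time out after retries for- 10.1.43.140,Number of retries = 3, "
    "Request: FRAMED-IP-ADDRESS=192.168.3.10,CALLING-STATION-ID=0005.9A3C.2A02,"
    "NAS-PORT-TYPE=5,USER-PASSWORD=xxxxxxxxx,USER-NAME=0005.9A3C.2A02,"
    "ACCT-STATUS-TYPE=1,NAS-IP-ADDRESS=11.11.118.69,NAS-PORT=0,"
    "CISCO-AVPAIR=parent-session-id=00011,service-name=A0F0050M050M000005MQ,"
    "portbundle=enable,ACCT-SESSION-ID=child_00011,NAS-PORT-ID=0/0/0/701"
)

entry = parse_retry_entry(SAMPLE)
print(entry["server"], entry["status"], entry["target"], entry["retries"])
```

The same pattern also matches the `Success after retries`, `Success not at first time` and `Queue Full` status strings that appear in the other samples in this section.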

Log for Success on Secondary After Primary Fails: The system generates a log entry in ocs_consolidated-retryandsuccess.csv (for OCS) or pmz_consolidated-retryandsuccess.csv (for PMZ) when an accounting message fails to reach the primary OCS or PMZ server after all retries but reaches the secondary server.

radius server-group grp1

servers WB_12100

primary 10.1.43.142

secondary 10.1.43.140

nas-ip 11.11.118.69

timeout-seconds 5

server-type online

retries 3

exit

servers PassiveMZ-12998

primary 10.1.45.171

secondary 10.1.45.170

nas-ip 11.11.118.69

timeout-seconds 5

server-type offline

retries 3

exit

This is a sample log entry for the OCS server in the Log for Success on Secondary After Primary Fails scenario.

2025-06-17 09:01:36,934 WB_12100,Primary=false,

Secondary=true,Success after retries for- 10.1.43.140,

Number of retries = 0, Request: FRAMED-IP-ADDRESS=192.168.3.10,

CALLING-STATION-ID=0005.9A3C.1A01,NAS-PORT-TYPE=5,USER-PASSWORD=xxxxxxxxx,

USER-NAME=0005.9A3C.1A01,ACCT-STATUS-TYPE=1,NAS-IP-ADDRESS=11.11.118.69,

NAS-PORT=0,CISCO-AVPAIR=parent-session-id=00011,service-name=A0F0050M050M000005MQ,

portbundle=enable,

ACCT-SESSION-ID=child_00011,NAS-PORT-ID=0/0/0/701

This is a sample log entry for the PMZ server in the Log for Success on Secondary After Primary Fails scenario.

2025-06-17 09:01:38,013 PassiveMZ-12998,Primary=false,

Secondary=true,Success after retries for- 10.1.45.170,Number of retries = 0,

Request: FRAMED-IP-ADDRESS=192.168.3.10,CALLING-STATION-ID=0005.9A3C.1A01,

NAS-PORT-TYPE=5,USER-PASSWORD=xxxxxxxxx,USER-NAME=0005.9A3C.1A01,

ACCT-STATUS-TYPE=1,NAS-IP-ADDRESS=11.11.118.69,NAS-PORT=0,CISCO-AVPAIR=parent-session-id=00011,

service-name=A0F0050M050M000005MQ,portbundle=enable,

ACCT-SESSION-ID=child_00011,NAS-PORT-ID=0/0/0/701

Log for Success After Retries to Primary: The system generates a log entry in ocs_consolidated-retryandsuccess.csv (for OCS) or pmz_consolidated-retryandsuccess.csv (for PMZ) when an accounting message is delivered to the primary OCS or PMZ server after one or more retries.

radius server-group grp1

servers WB_12100

primary 10.1.43.142

secondary 10.1.43.140

nas-ip 11.11.118.69

timeout-seconds 5

server-type online

retries 3

exit

servers PassiveMZ-12998

primary 10.1.45.171

secondary 10.1.45.170

nas-ip 11.11.118.69

timeout-seconds 5

server-type offline

retries 3

exit

This is a sample log entry for the OCS server in the Log for Success After Retries to Primary scenario.

2025-06-13 18:52:25,632 WB_12100,Primary=true,Secondary=false,

Success not at first time - for- 10.1.43.142,Number of retries = 2,

Request: FRAMED-IP-ADDRESS=192.168.3.10,CALLING-STATION-ID=0005.9A3C.4A04,

NAS-PORT-TYPE=5,USER-PASSWORD=xxxxxxxxx,USER-NAME=0005.9A3C.4A04,

ACCT-STATUS-TYPE=1,NAS-IP-ADDRESS= 11.11.118.69,NAS-PORT=0,CISCO-AVPAIR=parent-session-id=00011,

service-name=A0F0050M050M000005MQ,portbundle=enable,

ACCT-SESSION-ID=child_00011,NAS-PORT-ID=0/0/0/701

This is a sample log entry for the PMZ server in the Log for Success After Retries to Primary scenario.

2025-06-13 19:06:08,883 PassiveMZ-12998,Primary=true,

Secondary=false,Success not at first time - for- 10.1.45.171,

Number of retries = 2, Request: FRAMED-IP-ADDRESS=192.168.3.10,

CALLING-STATION-ID=0005.9A3C.4A04,NAS-PORT-TYPE=5,USER-PASSWORD=xxxxxxxxx,

USER-NAME=0005.9A3C.4A04,ACCT-STATUS-TYPE=1,NAS-IP-ADDRESS= 11.11.118.69,

NAS-PORT=0,CISCO-AVPAIR=parent-session-id=00011,service-name=A0F0050M050M000005MQ,portbundle=enable,

ACCT-SESSION-ID=child_00011,NAS-PORT-ID=0/0/0/701

Log for Full Queue: The system generates a log entry when the proxy queue is full and cannot process additional messages.

radius server-group grp1

servers WB_12100

primary 10.1.43.142

secondary 10.1.43.140

nas-ip 11.11.118.69

timeout-seconds 5

thread-pool-size 300

server-type online

max-proxy-queue-size 50000

retries 3

exit

servers PassiveMZ-12998

primary 10.1.45.171

secondary 10.1.45.170

nas-ip 11.11.118.69

timeout-seconds 5

thread-pool-size 300

server-type offline

max-proxy-queue-size 50000

retries 3

exit

This is a sample log entry for the OCS server in the Log for Full Queue scenario.

2025-07-05 14:59:10,109 WB_12100,Primary=false,

Secondary=false,Queue Full for - 10.1.43.142,Number of retries = 0,

Request: FRAMED-IP-ADDRESS=192.168.3.10,CALLING-STATION-ID=0005.9A3C.5A05,

NAS-PORT-TYPE=5,USER-PASSWORD=xxxxxxxxx,USER-NAME=0005.9A3C.5A05,ACCT-STATUS-TYPE=3,

NAS-IP-ADDRESS=11.11.118.69,NAS-PORT=0,CISCO-AVPAIR=parent-session-id=00011,

service-name=A0F0050M050M000005MQ,portbundle=enable,ACCT-SESSION-ID=child_00011,

NAS-PORT-ID=0/0/0/701

This is a sample log entry for the PMZ server in the Log for Full Queue scenario.

2025-07-05 14:59:10,108 PassiveMZ-12998,Primary=false,

Secondary=false,Queue Full for - 10.1.45.171,Number of retries = 0,

Request: FRAMED-IP-ADDRESS=192.168.3.10,CALLING-STATION-ID=0005.9A3C.5A05,

NAS-PORT-TYPE=5,USER-PASSWORD=xxxxxxxxx,USER-NAME=0005.9A3C.5A05,

ACCT-STATUS-TYPE=3,NAS-IP-ADDRESS=11.11.118.69,NAS-PORT=0,CISCO-AVPAIR=parent-session-id=00011,

service-name=A0F0050M050M000005MQ,portbundle=enable,

ACCT-SESSION-ID=child_00011,NAS-PORT-ID=0/0/0/701
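The five scenarios above can be summarized as a small decision function. This is a sketch of the documented behavior only, not the product's implementation. The names `classify_outcome` and `log_file_for` are hypothetical, and because this section does not name the file that receives Queue Full entries, the sketch returns no file name for that case.

```python
from typing import Optional


def classify_outcome(queue_full: bool, primary_ok: bool,
                     secondary_ok: bool, retries: int) -> str:
    """Map a delivery outcome to the scenario described in this section."""
    if queue_full:
        # Proxy queue could not accept the message.
        return "queue-full"
    if primary_ok:
        # First-attempt success on the primary produces no log entry.
        return "retryandsuccess" if retries > 0 else "none"
    if secondary_ok:
        # Primary failed, secondary accepted the message.
        return "retryandsuccess"
    # Neither primary nor secondary was reachable after all retries.
    return "retryandfailure"


def log_file_for(server_kind: str, outcome: str) -> Optional[str]:
    """Return the CSV file name for 'ocs' or 'pmz', or None if none is documented."""
    if outcome in ("retryandsuccess", "retryandfailure"):
        return f"{server_kind}_consolidated-{outcome}.csv"
    return None


# Example: primary answered on the third attempt (two retries) for an OCS server.
outcome = classify_outcome(queue_full=False, primary_ok=True,
                           secondary_ok=False, retries=2)
print(outcome, log_file_for("ocs", outcome))
```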

Sample logs for Grafana GUI user login details:

Grafana user login details are captured in the logs of the Grafana pod, which runs in the CEE namespace.

$ kubectl get pods -A|grep graf

cee grafana-bc8df4c5d-zxhb6 2/2 Running 3 (16d ago) 28d

cee grafana-dashboard-metrics-9d666f59-m67zg 1/1 Running 0 28d

pcf grafana-dashboard-cdl-pcf-764ccc76f9-l29jd 1/1 Running 0 16d

pcf grafana-dashboard-etcd-pcf-77664d6867-27fvb 1/1 Running 0 16d

pcf grafana-dashboard-pcf-748fb659bd-9sfqj 1/1 Running 0 16d

NOTE: The Grafana pod listed above logs the user login details.

Sample Logs:

When the Grafana ingress URL is opened in a browser:

logger=context userId=0 orgId=0 uname= t=2025-10-24T11:10:29.611429757Z level=info msg="Request Completed" method=GET path=/ status=302 remote_addr=10.1.14.204 time_ms=0 duration=479.628µs size=29 referer= handler=/ status_source=server

When a user logs in with admin credentials:

logger=context userId=2 orgId=1 uname=admin t=2025-10-24T11:13:54.213746926Z level=info msg="Request Completed" method=GET path=/api/live/ws status=-1 remote_addr=11.11.76.195 time_ms=20 duration=20.484741ms size=0 referer= handler=/api/live/ws status_source=server

When the admin user logs out:

logger=http.server t=2025-10-24T11:15:39.54041156Z level=info msg="Successful Logout" userID=2

logger=context userId=2 orgId=1 uname=admin t=2025-10-24T11:15:39.540592971Z level=info msg="Request Completed" method=GET path=/logout status=302 remote_addr=11.11.76.195 time_ms=53 duration=53.590989ms size=29 referer="https://grafana.10.86.70.223.nip.io/?orgId=1" handler=/logout status_source=server
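The Grafana samples above are space-separated `key=value` pairs, with values that contain spaces enclosed in double quotes. The sketch below shows one way to split such a line into fields, for example to pick out the user name, request path and source address. It uses Python's standard `shlex` module, which is an assumption about how quoting should be handled rather than Grafana's own parser, and the function name `parse_grafana_line` is hypothetical.

```python
import shlex


def parse_grafana_line(line: str) -> dict:
    """Split a key=value log line into a dictionary of fields."""
    fields = {}
    # shlex.split keeps a quoted value such as msg="Request Completed"
    # together as one token and removes the quotes.
    for token in shlex.split(line):
        key, sep, value = token.partition("=")
        if sep:  # ignore tokens that are not key=value pairs
            fields[key] = value
    return fields


# The logout request sample from this section.
LOGOUT_SAMPLE = (
    'logger=context userId=2 orgId=1 uname=admin '
    't=2025-10-24T11:15:39.540592971Z level=info msg="Request Completed" '
    'method=GET path=/logout status=302 remote_addr=11.11.76.195 time_ms=53 '
    'duration=53.590989ms size=29 '
    'referer="https://grafana.10.86.70.223.nip.io/?orgId=1" '
    'handler=/logout status_source=server'
)

fields = parse_grafana_line(LOGOUT_SAMPLE)
print(fields["uname"], fields["path"], fields["remote_addr"])
```

An empty value, such as `uname=` in the entry logged before anyone has signed in, is returned as an empty string.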
