Overview of Integrating Secure Workload with ACI

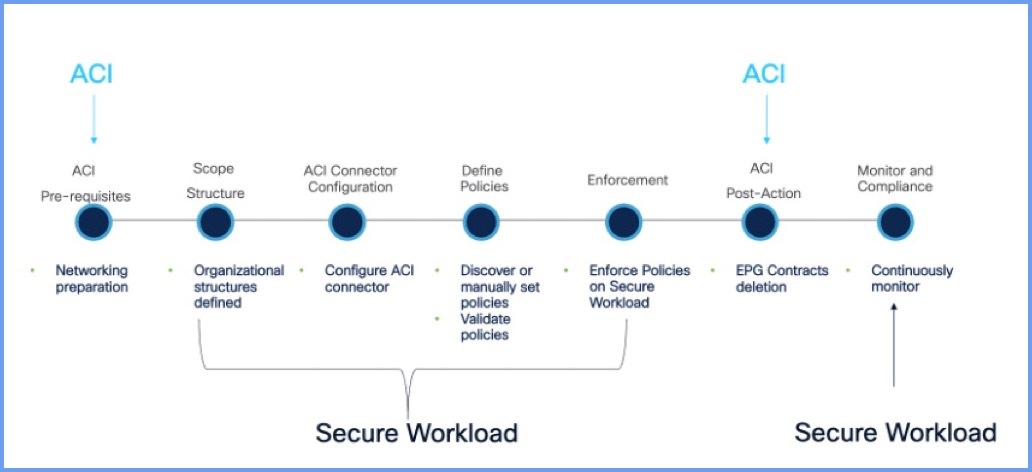

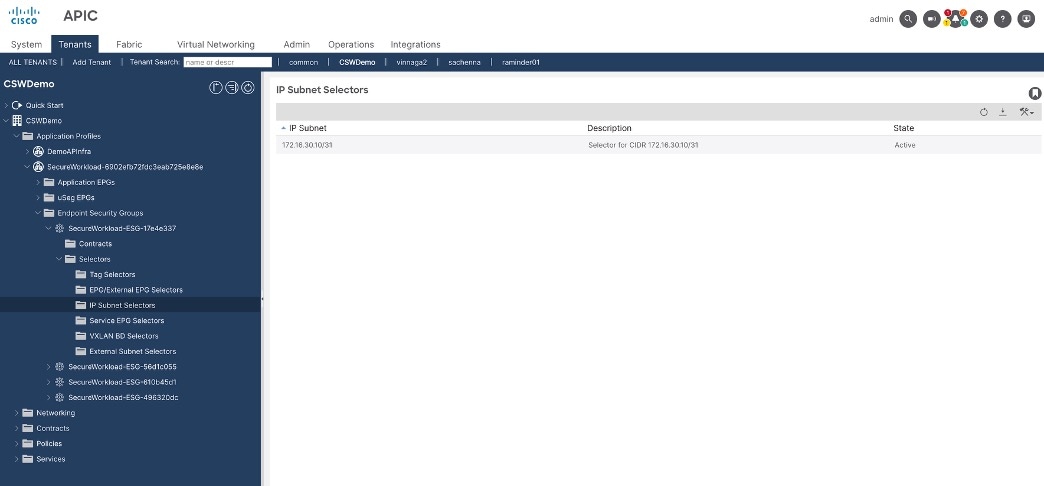

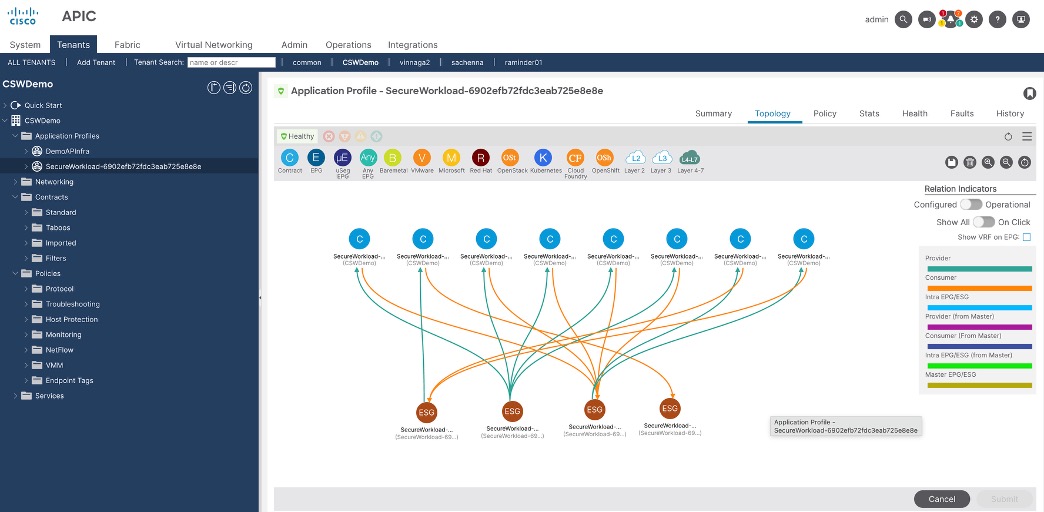

Secure Workload integrates with Application Centric Infrastructure (ACI) to enhance workload visibility, policy enforcement, and segmentation within ACI environments. The integration enables dynamic policy optimization, telemetry collection, and enforcement of segmentation policies directly on ACI fabrics.

This integration offers the following features and benefits:

- Realize better security: Using the ACI fabric, you can move from network-centric segmentation to granular, application-centric segmentation that protects critical business resources.
- Monitor ACI fabric health: Gain visibility into the health of switch ternary content-addressable memory (TCAM).
- Protect existing infrastructure: Leverage your existing on-premises ACI deployment and extend its capabilities to the broader Cisco Hybrid Mesh Firewall ecosystem.
- Apply segmentation controls: Use AI/ML to discover, validate, analyze, and enforce segmentation policies without disrupting business operations.
- Save time managing policies: Automating the policy lifecycle eliminates manual deployment of segmentation policies and significantly reduces operational overhead.

By integrating Secure Workload with ACI, users can achieve unified segmentation, enhanced visibility, and dynamic policy enforcement across their data center environments.
