FlexPod with Cisco AI POD: Infrastructure for AI Training and Fine-Tuning Deployment Guide

Available Languages

Bias-Free Language

The documentation set for this product strives to use bias-free language. For the purposes of this documentation set, bias-free is defined as language that does not imply discrimination based on age, disability, gender, racial identity, ethnic identity, sexual orientation, socioeconomic status, and intersectionality. Exceptions may be present in the documentation due to language that is hardcoded in the user interfaces of the product software, language used based on RFP documentation, or language that is used by a referenced third-party product. Learn more about how Cisco is using Inclusive Language.

- US/Canada 800-553-2447

- Worldwide Support Phone Numbers

- All Tools

Feedback

Feedback

![]()

In partnership with:

About the Cisco Validated Design Program

The Cisco Validated Design (CVD) program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, go to: http://www.cisco.com/go/designzone.

Cisco AI PODs are modular, pre-validated infrastructure solutions designed to accelerate the entire AI lifecycle, including training, fine-tuning, and high-throughput inferencing. They leverage Cisco UCS compute, Cisco Nexus networking, advanced GPUs, and integrated software such as NVIDIA AI Enterprise and RedHat OpenShift to deliver scalable, secure, and efficient AI infrastructure suitable for both data center and edge deployments. These PODs simplify AI adoption by providing centralized management through Cisco Intersight and Nexus Dashboard, enabling rapid deployment, automation, and operational visibility. Supporting diverse AI workloads like large language models, generative AI, and real-time analytics, Cisco AI PODs offer flexible configurations tailored to various business needs and cost models. Backed by Cisco Validated Designs and partner storage options, they ensure reliability, performance, and seamless integration within existing IT environments, helping organizations innovate and scale AI with confidence and reduced complexity.

Combining Cisco AI PODs with FlexPod Datacenter creates a powerful, scalable, and validated infrastructure solution optimized for AI and machine learning workloads. FlexPod Datacenter provides a converged architecture integrating Cisco UCS servers, Cisco Nexus switches, and NetApp storage, designed for high availability and flexibility. When integrated with Cisco AI PODs, which include advanced GPU capabilities and AI-optimized compute resources, the combined solution supports accelerated AI lifecycle processes such as training, inferencing, and model deployment. This integration leverages Cisco UCS servers, NVIDIA GPUs, and software platforms like NVIDIA Base Command Manager and Red Hat OpenShift to deliver a unified environment that simplifies AI infrastructure management through Cisco Intersight. The solution offers high-speed networking, persistent storage, and automation playbooks to reduce deployment time and operational complexity, enabling enterprises to scale AI workloads efficiently while maintaining security and operational visibility. This combined approach supports diverse AI use cases, including generative AI and MLOps, with validated designs that minimize risk and maximize performance in enterprise data centers.

For information about the FlexPod design and deployment details, including the configuration of various elements of design and associated best practices, refer to Cisco Validated Designs for FlexPod, here: https://www.cisco.com/c/en/us/solutions/design-zone/data-center-design-guides/flexpod-design-guides.html.

Solution Overview

This chapter contains the following:

● Purpose of this documentPurpose of this document

● New in this releaseNew in this release

The intended audience of this document includes but is not limited to IT architects, sales engineers, field consultants, professional services, IT managers, partner engineering, and customers who want to take advantage of an infrastructure built to deliver IT efficiency and enable IT innovation.

This document provides deployment guidance around setting up Cisco AI PODs with Cisco UCS C885A M8 servers along with FlexPod Datacenter for AI training and fine-tuning use cases. This configuration is built as a tenant on top of FlexPod Base and assumes FlexPod Base has already been configured. This document introduces various design elements and explains various considerations and best practices for successful deployment.

The following design elements distinguish this version of FlexPod from previous models:

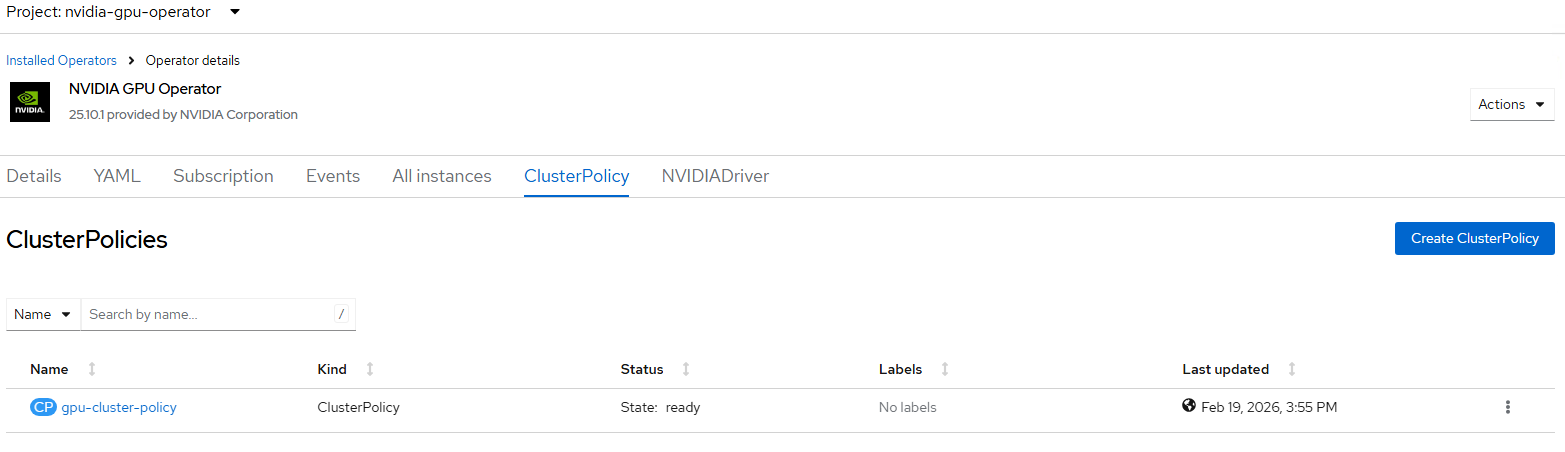

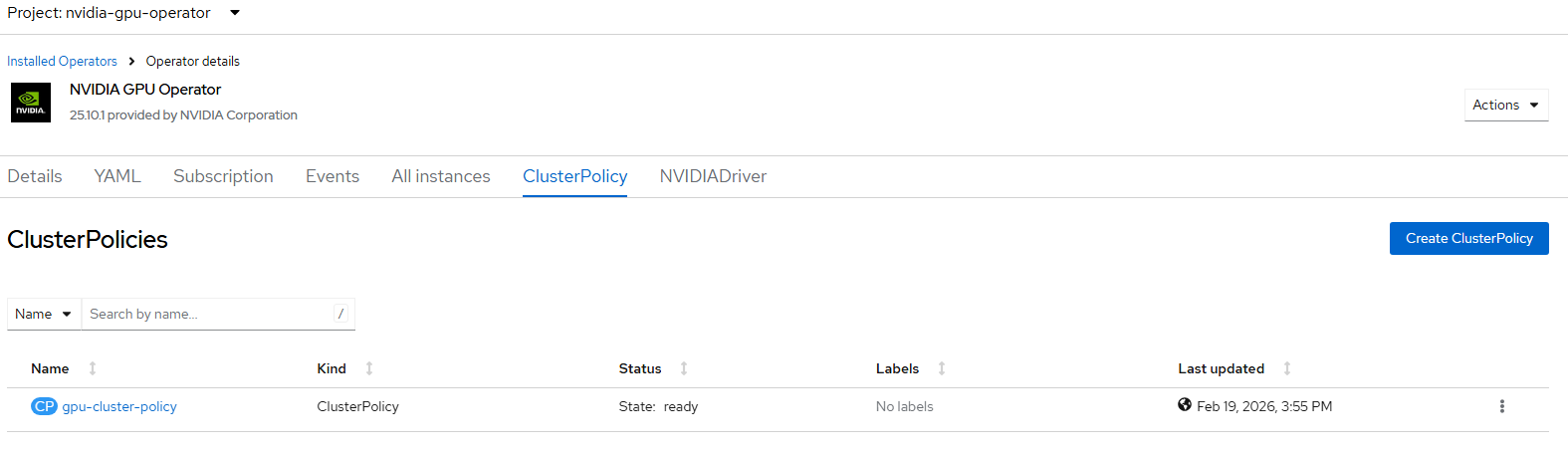

Configuration of AI PODs with NetApp Storage first with NVIDIA Base Command Manager and running sample training workloads. ● Configuration of AI PODs with NetApp Storage first with NVIDIA Base Command Manager and running sample training workloads.

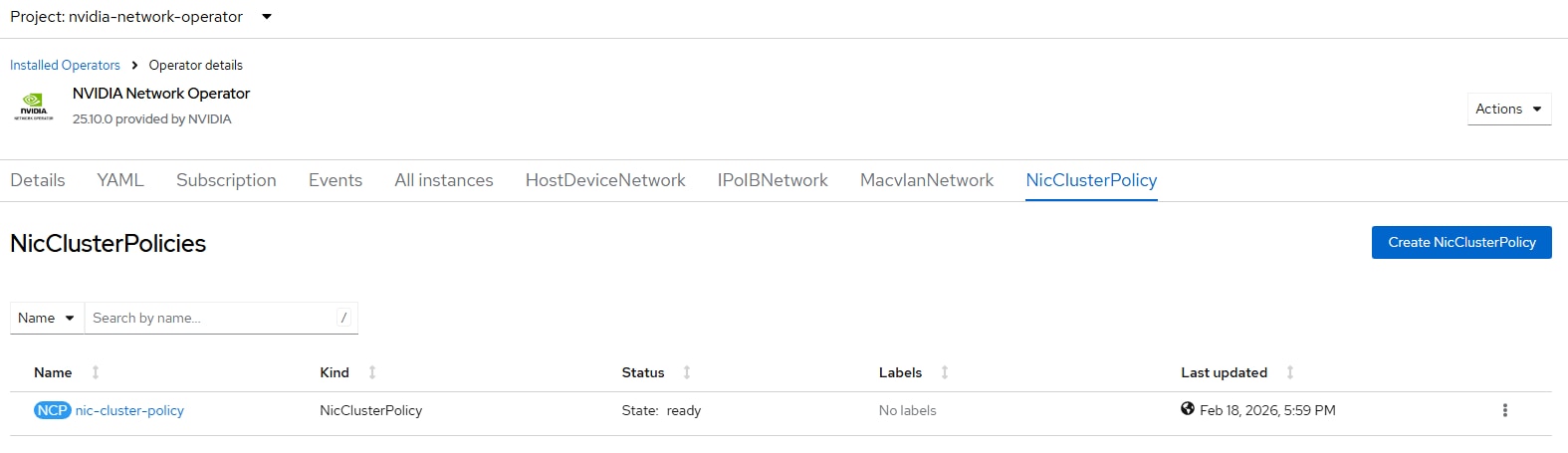

● Adding the Cisco UCS C885A M8 nodes to an existing OpenShift cluster and setting up the East-West or backend networking.

Deployment Hardware and Software

This chapter contains the following:

● Design RequirementsDesign Requirements

● NetApp ONTAP DesignNetApp ONTAP Design

● Physical TopologyPhysical Topology

● Software RevisionsSoftware Revisions

● FlexPod CablingFlexPod Cabling

The FlexPod Datacenter with Cisco UCS and Cisco Intersight meets the following general design requirements:

● Resilient design across all layers of the infrastructure with no single point of failure

● Scalable design with the flexibility to add compute capacity, storage, or network bandwidth as needed

● Modular design that can be replicated to expand and grow as the needs of the business grow

● Flexible design that can support different models of various components with ease

● Simplified design with the ability to integrate and automate with external automation tools

● Cloud-enabled design which can be configured, managed, and orchestrated from the cloud using GUI or APIs

To deliver a solution which meets all these design requirements, various solution components are connected and configured as explained in the following sections.

For the AI POD networking and server design, please refer to the Cisco AI POD for Enterprise Training and Fine-Tuning Design Guide - Cisco. Only the NetApp ONTAP Design is listed in this document.

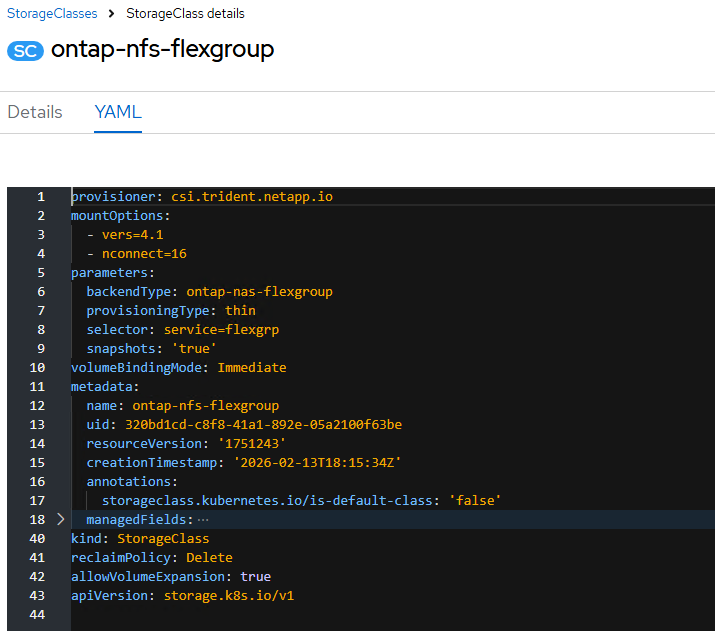

The storage system and design is a critical component of the AI training and fine-tuning infrastructure. AI workloads require high-performance, scalability, and secure access to storage to read large training datasets and to write model checkpoints, logging, and other artifacts during the training process. A key storage requirement is for very high-throughput sequential reads, as massive datasets may need to be loaded into GPU memory at the beginning of each training epoch.

The NetApp AFF A90 storage system is a 4RU chassis containing 2 controllers that operate as high availability partners (HA Pair) for each other, with up to 48 2.5-inch form-factor solid state disks (SSD). Each controller is connected to a separate pair of Cisco Nexus 9332D-GX2B leaf switches (frontend fabric) using two 100GE connectivity providing general NFS v3 and v4 services, and as well as S3 access to shared filesystems if desired. To enable high performance and scalability, the storage controllers form a storage cluster that enables the entire performance and capacity of the cluster nodes to be combined into a single namespace called a FlexGroup with data distributed across the disks of each node in the cluster.

The storage cluster also supports NFS v4.1 with Parallel NFS (pNFS) that enables clients to establish connections directly to every controller in the cluster. Additionally, session trunking combines the performance from multiple physical interfaces into a single session, enabling even single-threaded workloads to access more network bandwidth than is possible with traditional ethernet bonding. Combining all these features with RDMA enables the AFF A90 storage system to deliver low latency and high throughput that scales linearly for workloads leveraging NVIDIA GPUDirect Storage. The NVIDIA Enterprise Reference Architecture (ERA) provides the guidance to do the scaling of AFF A90 storage nodes along with Cisco C series GPU nodes

The AI PODs with FlexPod Datacenter with Red Hat OpenShift on Bare Metal infrastructure configuration is shown here. The AI PODs with FlexPod Datacenter with NVIDIA Base Command Manager

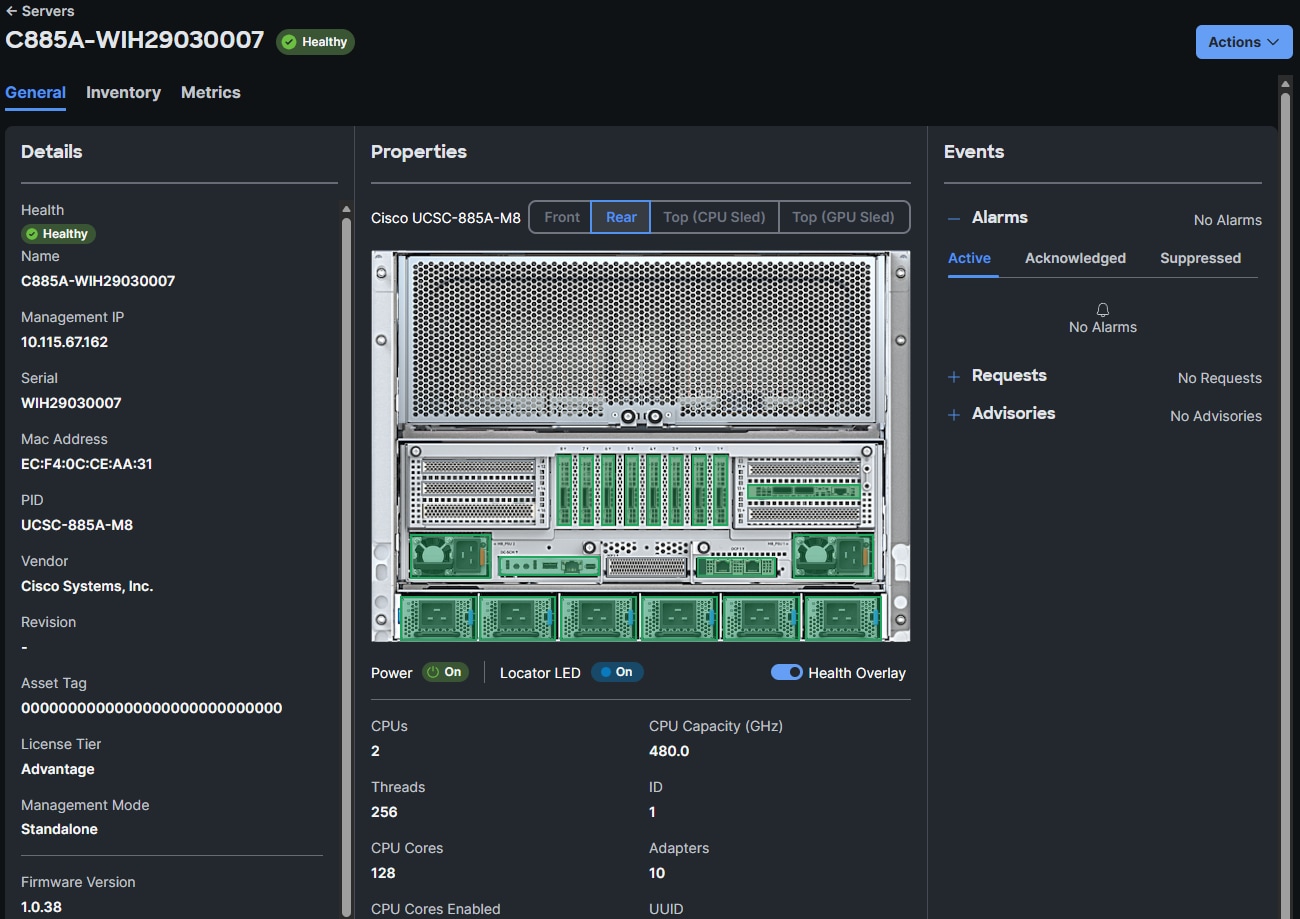

● Cisco UCS C885A M8 servers each with 8 NVIDIA H200 GPUs

● Cisco UCS X9508 Chassis with eight Cisco UCS X210c Compute Nodes for OpenShift cluster nodes and supporting services

● Cisco UCS X-Series Direct Fabric Interconnects 9108 to support 100GbE connectivity from various components

● High-speed Cisco NX-OS-based Nexus 9332D-GX2B and 9364D-GX2A switching design to support 100GE and 400GE connectivity

● NetApp AFF A90 storage controllers 100/200G Ethernet

The software components of this solution consist of:

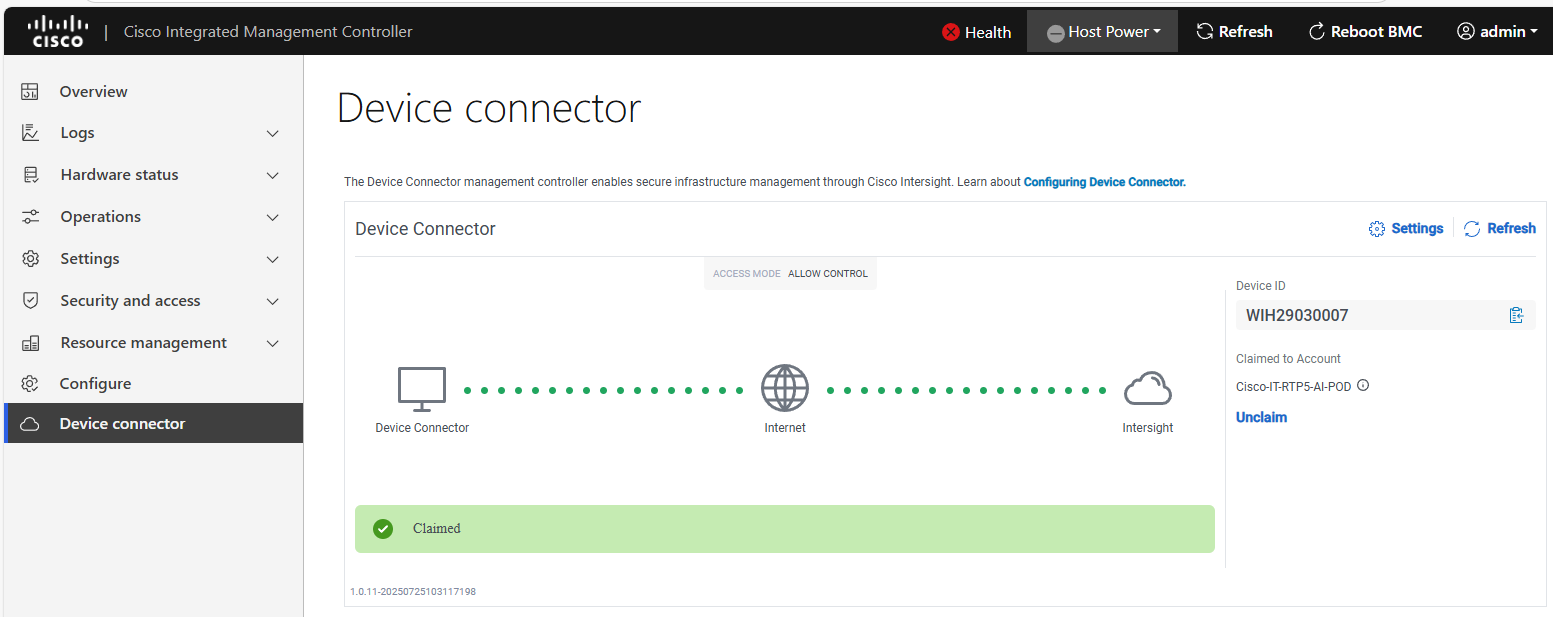

● Cisco Intersight to deploy, maintain, monitor and support the Cisco UCS server components

● Cisco Nexus Dashboard to deploy, maintain, and support the Cisco Nexus Switching Fabrics

● NVIDIA Base Command Manager to orchestrate training workloads on Ubuntu

● Red Hat OpenShift which provides a platform for both containers and VMs

FlexPod Datacenter with AI PODs Topology

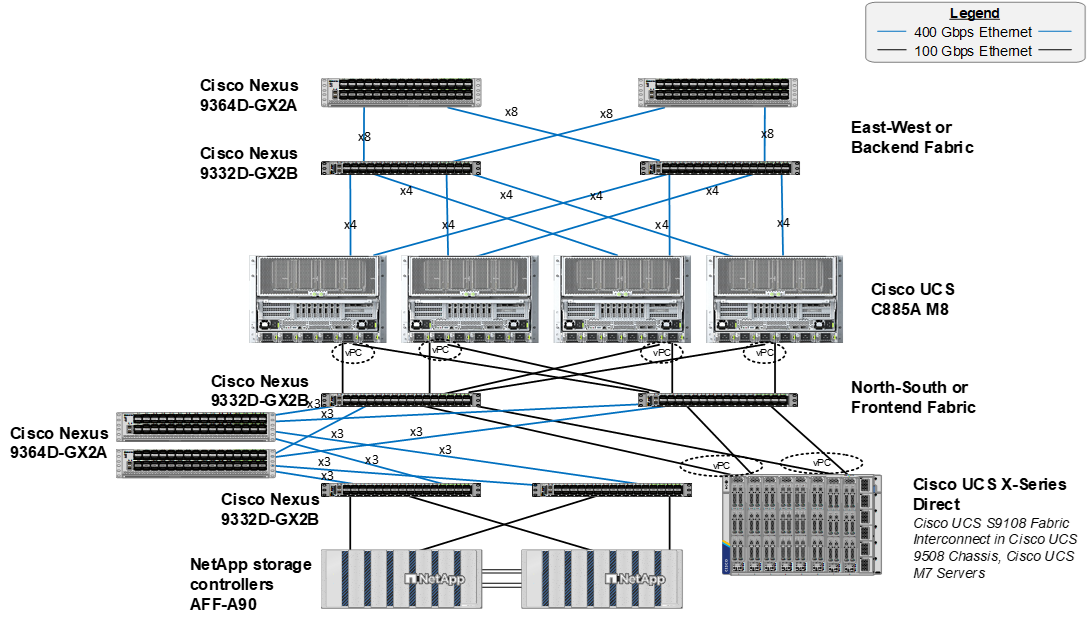

Figure 1 shows various hardware components and the network connections for this IP-based FlexPod design.

The reference hardware configuration includes:

● A scalable East-West or Backend fully non-blocking Spine Leaf fabric consisting of Cisco Nexus 9332D-GX2B leafs and Nexus 9364D-GX2A spines.

● 4 or more Cisco UCS C885A M8 servers, each with 8 NVIDIA H200 SXM GPUs.

● A scalable North-South or Frontend Spine Leaf fabric consisting of Cisco Nexus 9332D-GX2B leafs and Nexus 9364D-GX2A spines. This fabric has dedicated storage and compute leaf pairs.

● Two Cisco UCS Fabric Interconnects 9108 100G (FIs) in the chassis provide the chassis connectivity. At least two 100 Gigabit Ethernet ports from each FI, configured as a Port-Channel, are connected to each Nexus 9332D-GX2B switch in the North-South or Frontend Spine Leaf fabric. 25 Gigabit Ethernet connectivity is also supported as well as other versions of the Cisco UCS FI that would be used with Intelligent Fabric Modules (IFMs) in the chassis.

● One Cisco UCS X9508 Chassis contains up to 8 Cisco UCS X210c servers for OpenShift cluster nodes and supporting services nodes for services such as DNS/DHCP or configuration VMs.

● One NetApp AFF A90 HA pair connects to the Cisco Nexus 9332D-GX2B Switches using two 100 GE ports from each controller configured as individual links. 200GE connectivity is also supported when NVIDIA ConnectX-7 cards in the NetApp storage controllers are used.

Note: Only identically configured Cisco UCS C885A M8 servers should be added to an OpenShift cluster. Adding servers with different port configurations, such as Cisco UCS C-Series servers with only one ConnectX-7 will cause issues with Nic Cluster Policy in the NVIDIA Network Operator.

Table 1 lists VLANs configured for setting up the FlexPod environment along with their usage.

| VLAN ID |

Name |

Usage |

IP Subnet used in this deployment |

| 2* |

Native-VLAN |

Use VLAN 2 as native VLAN instead of default VLAN (1) |

|

| 550* |

OOB-MGMT-VLAN |

Out-of-band management VLAN to connect management ports for various devices |

10.115.90.0/26; GW: 10.115.90.1 |

| 703 |

OCP/Ubuntu-BareMetal-MGMT |

Routable VLAN used for Ubuntu management and OpenShift cluster and node management |

10.115.90.64/26; GW: 10.115.90.126 |

| 3051 |

NFS |

Used for Ubuntu storage and OpenShift NFS RWX Persistent Storage |

192.168.51.0/24 |

Note: *VLAN configured in FlexPod Base. In setting up FlexPod Base, the Nexus switch portion will not be set up.

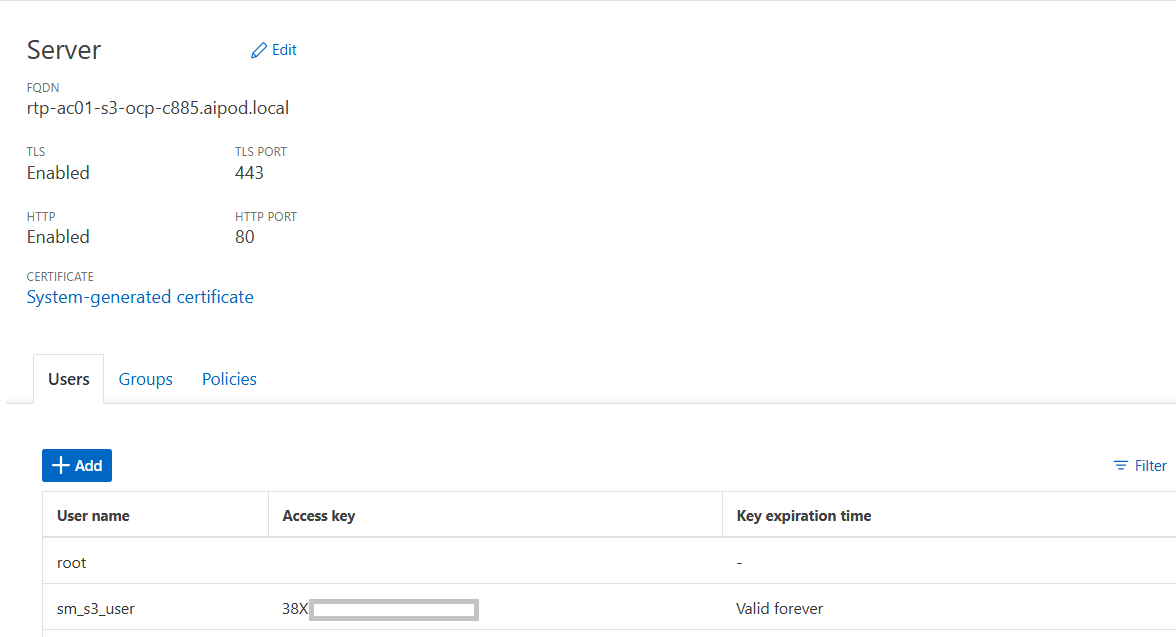

Note: S3 object storage was also used in this environment. It was determined that OpenShift uses the management network to reach S3 storage. A separate VLAN, subnet and vNIC was not defined for S3.

Table 2 lists the VMs or bare metal servers necessary for deployment as outlined in this document.

| Virtual Machine Description |

VLAN |

IP Address |

Comments |

| AD1 |

703 |

10.115.90.123 |

Hosted on pre-existing management infrastructure within the FlexPod |

| AD2 |

703 |

10.115.90.124 |

Hosted on pre-existing management infrastructure within the FlexPod |

| OCP Installer |

703 |

10.115.90.65 |

Hosted on pre-existing management infrastructure within the FlexPod |

| NVIDIA Base Command Head Node |

703 |

10.115.90.115 |

Hosted on pre-existing management infrastructure within the FlexPod |

Table 3 lists the software revisions for various components of the solution.

| Layer |

Device |

Image Bundle |

Comments |

| Compute |

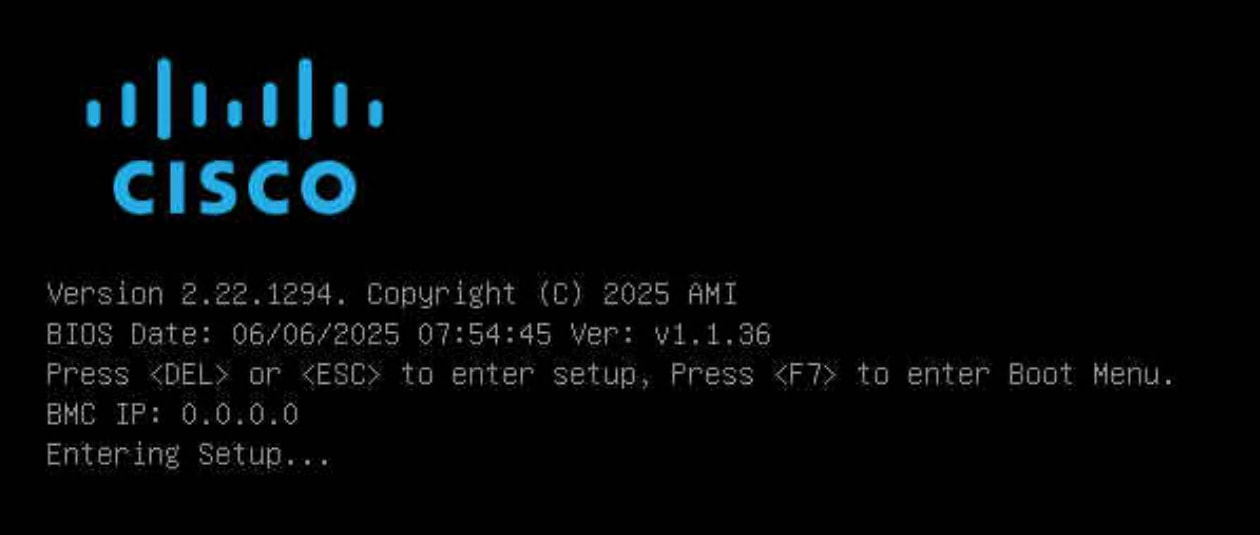

Cisco C885A M8 Firmware Package |

1.1(0.250025) |

Upgrades all server components |

|

|

Cisco UCS X210c M6 |

5.3(5.250021) |

Used for OpenShift Control Plane / Worker Nodes |

|

|

Cisco UCS Fabric Interconnect 9108 100G |

4.3(5.240162) |

|

| Network |

Cisco Nexus Dashboard |

4.1.1g |

|

| Cisco Nexus 9332D-GX2B NX-OS |

10.4(5) |

|

|

| Cisco Nexus 9364D-GX2A NX-OS |

10.4(5) |

|

|

| Storage |

NetApp AFF A90 |

ONTAP 9.15.1P7 |

Although ONTAP 9.15.1 was used for this validation |

| Software |

Red Hat OpenShift |

4.16 |

|

| NetApp Trident |

25.6.2 |

|

|

| NVIDIA H200 GPU Driver - Ubuntu |

570.133.20 |

|

|

|

|

NVIDIA H200 GPU CUDA Version - Ubuntu |

12.8 |

|

Table 4. Cisco Nexus Backend Fabric Cable Connections

| Device |

Port |

Speed |

Device |

Port |

Comment |

| BE-LF1 |

mgmt0 |

1G |

management switch |

||

| BE-LF1 |

Eth1/1 |

400G |

C885A-1 |

CX-7 1 |

|

| BE-LF1 |

Eth1/2 |

400G |

C885A-1 |

CX-7 3 |

|

| BE-LF1 |

Eth1/3 |

400G |

C885A-1 |

CX-7 5 |

|

| BE-LF1 |

Eth1/4 |

400G |

C885A-1 |

CX-7 7 |

|

| BE-LF1 |

Eth1/5 |

400G |

C885A-2 |

CX-7 1 |

|

| BE-LF1 |

Eth1/6 |

400G |

C885A-2 |

CX-7 3 |

|

| BE-LF1 |

Eth1/7 |

400G |

C885A-2 |

CX-7 5 |

|

| BE-LF1 |

Eth1/8 |

400G |

C885A-2 |

CX-7 7 |

|

| BE-LF1 |

Eth1/9 |

400G |

C885A-3 |

CX-7 1 |

|

| BE-LF1 |

Eth1/10 |

400G |

C885A-3 |

CX-7 3 |

|

| BE-LF1 |

Eth1/11 |

400G |

C885A-3 |

CX-7 5 |

|

| BE-LF1 |

Eth1/12 |

400G |

C885A-3 |

CX-7 7 |

|

| BE-LF1 |

Eth1/13 |

400G |

C885A-4 |

CX-7 1 |

|

| BE-LF1 |

Eth1/14 |

400G |

C885A-4 |

CX-7 3 |

|

| BE-LF1 |

Eth1/15 |

400G |

C885A-4 |

CX-7 5 |

|

| BE-LF1 |

Eth1/16 |

400G |

C885A-4 |

CX-7 7 |

|

| BE-LF1 |

Eth1/17 |

400G |

BE-SP1 |

Eth1/1 |

|

| BE-LF1 |

Eth1/18 |

400G |

BE-SP1 |

Eth1/2 |

|

| BE-LF1 |

Eth1/19 |

400G |

BE-SP1 |

Eth1/3 |

|

| BE-LF1 |

Eth1/20 |

400G |

BE-SP1 |

Eth1/4 |

|

| BE-LF1 |

Eth1/21 |

400G |

BE-SP1 |

Eth1/5 |

|

| BE-LF1 |

Eth1/22 |

400G |

BE-SP1 |

Eth1/6 |

|

| BE-LF1 |

Eth1/23 |

400G |

BE-SP1 |

Eth1/7 |

|

| BE-LF1 |

Eth1/24 |

400G |

BE-SP1 |

Eth1/8 |

|

| BE-LF1 |

Eth1/25 |

400G |

BE-SP2 |

Eth1/1 |

|

| BE-LF1 |

Eth1/26 |

400G |

BE-SP2 |

Eth1/2 |

|

| BE-LF1 |

Eth1/27 |

400G |

BE-SP2 |

Eth1/3 |

|

| BE-LF1 |

Eth1/28 |

400G |

BE-SP2 |

Eth1/4 |

|

| BE-LF1 |

Eth1/29 |

400G |

BE-SP2 |

Eth1/5 |

|

| BE-LF1 |

Eth1/30 |

400G |

BE-SP2 |

Eth1/6 |

|

| BE-LF1 |

Eth1/31 |

400G |

BE-SP2 |

Eth1/7 |

|

| BE-LF1 |

Eth1/32 |

400G |

BE-SP2 |

Eth1/8 |

|

| BE-LF2 |

mgmt0 |

1G |

management switch |

||

| BE-LF2 |

Eth1/1 |

400G |

C885A-1 |

CX-7 2 |

|

| BE-LF2 |

Eth1/2 |

400G |

C885A-1 |

CX-7 2 |

|

| BE-LF2 |

Eth1/3 |

400G |

C885A-1 |

CX-7 6 |

|

| BE-LF2 |

Eth1/4 |

400G |

C885A-1 |

CX-7 8 |

|

| BE-LF2 |

Eth1/5 |

400G |

C885A-2 |

CX-7 2 |

|

| BE-LF2 |

Eth1/6 |

400G |

C885A-2 |

CX-7 2 |

|

| BE-LF2 |

Eth1/7 |

400G |

C885A-2 |

CX-7 6 |

|

| BE-LF2 |

Eth1/8 |

400G |

C885A-2 |

CX-7 8 |

|

| BE-LF2 |

Eth1/9 |

400G |

C885A-3 |

CX-7 2 |

|

| BE-LF2 |

Eth1/10 |

400G |

C885A-3 |

CX-7 2 |

|

| BE-LF2 |

Eth1/11 |

400G |

C885A-3 |

CX-7 6 |

|

| BE-LF2 |

Eth1/12 |

400G |

C885A-3 |

CX-7 8 |

|

| BE-LF2 |

Eth1/13 |

400G |

C885A-4 |

CX-7 2 |

|

| BE-LF2 |

Eth1/14 |

400G |

C885A-4 |

CX-7 2 |

|

| BE-LF2 |

Eth1/15 |

400G |

C885A-4 |

CX-7 6 |

|

| BE-LF2 |

Eth1/16 |

400G |

C885A-4 |

CX-7 8 |

|

| BE-LF2 |

Eth1/17 |

400G |

BE-SP1 |

Eth1/9 |

|

| BE-LF2 |

Eth1/18 |

400G |

BE-SP1 |

Eth1/10 |

|

| BE-LF2 |

Eth1/19 |

400G |

BE-SP1 |

Eth1/11 |

|

| BE-LF2 |

Eth1/20 |

400G |

BE-SP1 |

Eth1/12 |

|

| BE-LF2 |

Eth1/21 |

400G |

BE-SP1 |

Eth1/13 |

|

| BE-LF2 |

Eth1/22 |

400G |

BE-SP1 |

Eth1/14 |

|

| BE-LF2 |

Eth1/23 |

400G |

BE-SP1 |

Eth1/15 |

|

| BE-LF2 |

Eth1/24 |

400G |

BE-SP1 |

Eth1/16 |

|

| BE-LF2 |

Eth1/25 |

400G |

BE-SP2 |

Eth1/9 |

|

| BE-LF2 |

Eth1/26 |

400G |

BE-SP2 |

Eth1/10 |

|

| BE-LF2 |

Eth1/27 |

400G |

BE-SP2 |

Eth1/11 |

|

| BE-LF2 |

Eth1/28 |

400G |

BE-SP2 |

Eth1/12 |

|

| BE-LF2 |

Eth1/29 |

400G |

BE-SP2 |

Eth1/13 |

|

| BE-LF2 |

Eth1/30 |

400G |

BE-SP2 |

Eth1/14 |

|

| BE-LF2 |

Eth1/31 |

400G |

BE-SP2 |

Eth1/15 |

|

| BE-LF2 |

Eth1/32 |

400G |

BE-SP2 |

Eth1/16 |

|

| BE-SP1 |

mgmt0 |

1G |

management switch |

||

| BE-SP1 |

Eth1/1 |

400G |

BE-LF1 |

Eth1/17 |

|

| BE-SP1 |

Eth1/2 |

400G |

BE-LF1 |

Eth1/18 |

|

| BE-SP1 |

Eth1/3 |

400G |

BE-LF1 |

Eth1/19 |

|

| BE-SP1 |

Eth1/4 |

400G |

BE-LF1 |

Eth1/20 |

|

| BE-SP1 |

Eth1/5 |

400G |

BE-LF1 |

Eth1/21 |

|

| BE-SP1 |

Eth1/6 |

400G |

BE-LF1 |

Eth1/22 |

|

| BE-SP1 |

Eth1/7 |

400G |

BE-LF1 |

Eth1/23 |

|

| BE-SP1 |

Eth1/8 |

400G |

BE-LF1 |

Eth1/24 |

|

| BE-SP1 |

Eth1/9 |

400G |

BE-LF2 |

Eth1/17 |

|

| BE-SP1 |

Eth1/10 |

400G |

BE-LF2 |

Eth1/18 |

|

| BE-SP1 |

Eth1/11 |

400G |

BE-LF2 |

Eth1/19 |

|

| BE-SP1 |

Eth1/12 |

400G |

BE-LF2 |

Eth1/20 |

|

| BE-SP1 |

Eth1/13 |

400G |

BE-LF2 |

Eth1/21 |

|

| BE-SP1 |

Eth1/14 |

400G |

BE-LF2 |

Eth1/22 |

|

| BE-SP1 |

Eth1/15 |

400G |

BE-LF2 |

Eth1/23 |

|

| BE-SP1 |

Eth1/16 |

400G |

BE-LF2 |

Eth1/24 |

|

| BE-SP2 |

mgmt0 |

1G |

management switch |

||

| BE-SP2 |

Eth1/1 |

400G |

BE-LF1 |

Eth1/25 |

|

| BE-SP2 |

Eth1/2 |

400G |

BE-LF1 |

Eth1/26 |

|

| BE-SP2 |

Eth1/3 |

400G |

BE-LF1 |

Eth1/27 |

|

| BE-SP2 |

Eth1/4 |

400G |

BE-LF1 |

Eth1/28 |

|

| BE-SP2 |

Eth1/5 |

400G |

BE-LF1 |

Eth1/29 |

|

| BE-SP2 |

Eth1/6 |

400G |

BE-LF1 |

Eth1/30 |

|

| BE-SP2 |

Eth1/7 |

400G |

BE-LF1 |

Eth1/31 |

|

| BE-SP2 |

Eth1/8 |

400G |

BE-LF1 |

Eth1/32 |

|

| BE-SP2 |

Eth1/9 |

400G |

BE-LF2 |

Eth1/25 |

|

| BE-SP2 |

Eth1/10 |

400G |

BE-LF2 |

Eth1/26 |

|

| BE-SP2 |

Eth1/11 |

400G |

BE-LF2 |

Eth1/27 |

|

| BE-SP2 |

Eth1/12 |

400G |

BE-LF2 |

Eth1/28 |

|

| BE-SP2 |

Eth1/13 |

400G |

BE-LF2 |

Eth1/29 |

|

| BE-SP2 |

Eth1/14 |

400G |

BE-LF2 |

Eth1/30 |

|

| BE-SP2 |

Eth1/15 |

400G |

BE-LF2 |

Eth1/31 |

|

| BE-SP2 |

Eth1/16 |

400G |

BE-LF2 |

Eth1/32 |

Table 5. Cisco Nexus Frontend Fabric Cable Connections

| Device |

Port |

Speed |

Device |

Port |

Comment |

| FE-LF1 |

mgmt0 |

1G |

management switch |

||

| FE-LF1 |

Eth1/1 |

200G |

C885A-1 |

BF 1 |

|

| FE-LF1 |

Eth1/2 |

200G |

C885A-2 |

BF 1 |

|

| FE-LF1 |

Eth1/3 |

200G |

C885A-3 |

BF 1 |

|

| FE-LF1 |

Eth1/4 |

200G |

C885A-4 |

BF 1 |

|

| FE-LF1 |

Eth1/5 |

100G |

S9108-A |

Eth1/5 |

UCS X-Series Direct |

| FE-LF1 |

Eth1/6 |

100G |

S9108-B |

Eth1/5 |

UCS X-Series Direct |

| FE-LF1 |

Eth1/7 |

100G |

S9108-A |

Eth1/6 |

UCS X-Series Direct |

| FE-LF1 |

Eth1/8 |

100G |

S9108-B |

Eth1/6 |

UCS X-Series Direct |

| FE-LF1 |

Eth1/20/1 |

100G |

C225M6-1 |

VIC 1 |

|

| FE-LF1 |

Eth1/20/2 |

100G |

C225M6-2 |

VIC 1 |

|

| FE-LF1 |

Eth1/20/3 |

100G |

C225M6-3 |

VIC 1 |

|

| FE-LF1 |

Eth1/20/4 |

100G |

C225M6-4 |

VIC 1 |

|

| FE-LF1 |

Eth1/21 |

100G |

RTP5-BCM-MGMT |

VIC 1 |

BCM Head Node |

| FE-LF1 |

Eth1/27 |

400G |

FE-SP1 |

Eth1/7 |

|

| FE-LF1 |

Eth1/28 |

400G |

FE-SP1 |

Eth1/8 |

|

| FE-LF1 |

Eth1/29 |

400G |

FE-SP1 |

Eth1/9 |

|

| FE-LF1 |

Eth1/30 |

400G |

FE-SP2 |

Eth1/7 |

|

| FE-LF1 |

Eth1/31 |

400G |

FE-SP2 |

Eth1/8 |

|

| FE-LF1 |

Eth1/32 |

400G |

FE-SP2 |

Eth1/9 |

|

| FE-LF2 |

mgmt0 |

1G |

management switch |

||

| FE-LF2 |

Eth1/1 |

200G |

C885A-1 |

BF 2 |

|

| FE-LF2 |

Eth1/2 |

200G |

C885A-2 |

BF 2 |

|

| FE-LF2 |

Eth1/3 |

200G |

C885A-3 |

BF 2 |

|

| FE-LF2 |

Eth1/4 |

200G |

C885A-4 |

BF 2 |

|

| FE-LF2 |

Eth1/5 |

100G |

S9108-A |

Eth1/7 |

UCS X-Series Direct |

| FE-LF2 |

Eth1/6 |

100G |

S9108-B |

Eth1/7 |

UCS X-Series Direct |

| FE-LF2 |

Eth1/7 |

100G |

S9108-A |

Eth1/8 |

UCS X-Series Direct |

| FE-LF2 |

Eth1/8 |

100G |

S9108-B |

Eth1/8 |

UCS X-Series Direct |

| FE-LF2 |

Eth1/20/1 |

100G |

C225M6-1 |

VIC 2 |

|

| FE-LF2 |

Eth1/20/2 |

100G |

C225M6-2 |

VIC 2 |

|

| FE-LF2 |

Eth1/20/3 |

100G |

C225M6-3 |

VIC 2 |

|

| FE-LF2 |

Eth1/20/4 |

100G |

C225M6-4 |

VIC 2 |

|

| FE-LF2 |

Eth1/21 |

100G |

RTP5-BCM-MGMT |

VIC 2 |

BCM Head Node |

| FE-LF2 |

Eth1/27 |

400G |

FE-SP1 |

Eth1/10 |

|

| FE-LF2 |

Eth1/28 |

400G |

FE-SP1 |

Eth1/11 |

|

| FE-LF2 |

Eth1/29 |

400G |

FE-SP1 |

Eth1/12 |

|

| FE-LF2 |

Eth1/30 |

400G |

FE-SP2 |

Eth1/10 |

|

| FE-LF2 |

Eth1/31 |

400G |

FE-SP2 |

Eth1/11 |

|

| FE-LF2 |

Eth1/32 |

400G |

FE-SP2 |

Eth1/12 |

|

| FE-SLF1 |

mgmt0 |

1G |

management switch |

||

| FE-SLF1 |

Eth1/24 |

100G |

RTP5-BCM-MGMT |

PCIe3 1 |

|

| FE-SLF1 |

Eth1/25 |

100G |

NetApp-01 |

e2b |

|

| FE-SLF1 |

Eth1/26 |

100G |

NetApp-02 |

e2b |

|

| FE-SLF1 |

Eth1/27 |

400G |

FE-SP1 |

Eth1/1 |

|

| FE-SLF1 |

Eth1/28 |

400G |

FE-SP1 |

Eth1/2 |

|

| FE-SLF1 |

Eth1/29 |

400G |

FE-SP1 |

Eth1/3 |

|

| FE-SLF1 |

Eth1/30 |

400G |

FE-SP2 |

Eth1/1 |

|

| FE-SLF1 |

Eth1/31 |

400G |

FE-SP2 |

Eth1/2 |

|

| FE-SLF1 |

Eth1/32 |

400G |

FE-SP2 |

Eth1/3 |

|

| FE-SLF1 |

mgmt0 |

1G |

management switch |

||

| FE-SLF1 |

Eth1/24 |

100G |

RTP5-BCM-MGMT |

PCIe3 2 |

|

| FE-SLF1 |

Eth1/25 |

100G |

NetApp-01 |

e3a |

|

| FE-SLF1 |

Eth1/26 |

100G |

NetApp-02 |

e3a |

|

| FE-SLF1 |

Eth1/27 |

400G |

FE-SP1 |

Eth1/4 |

|

| FE-SLF1 |

Eth1/28 |

400G |

FE-SP1 |

Eth1/5 |

|

| FE-SLF1 |

Eth1/29 |

400G |

FE-SP1 |

Eth1/6 |

|

| FE-SLF1 |

Eth1/30 |

400G |

FE-SP2 |

Eth1/4 |

|

| FE-SLF1 |

Eth1/31 |

400G |

FE-SP2 |

Eth1/5 |

|

| FE-SLF1 |

Eth1/32 |

400G |

FE-SP2 |

Eth1/6 |

|

| FE-SP1 |

mgmt0 |

1G |

management switch |

||

| FE-SP1 |

Eth1/1 |

400G |

FE-SLF1 |

Eth1/27 |

|

| FE-SP1 |

Eth1/2 |

400G |

FE-SLF1 |

Eth1/28 |

|

| FE-SP1 |

Eth1/3 |

400G |

FE-SLF1 |

Eth1/29 |

|

| FE-SP1 |

Eth1/4 |

400G |

FE-SLF2 |

Eth1/27 |

|

| FE-SP1 |

Eth1/5 |

400G |

FE-SLF2 |

Eth1/28 |

|

| FE-SP1 |

Eth1/6 |

400G |

FE-SLF2 |

Eth1/29 |

|

| FE-SP1 |

Eth1/7 |

400G |

FE-LF1 |

Eth1/27 |

|

| FE-SP1 |

Eth1/8 |

400G |

FE-LF1 |

Eth1/28 |

|

| FE-SP1 |

Eth1/9 |

400G |

FE-LF1 |

Eth1/29 |

|

| FE-SP1 |

Eth1/10 |

400G |

FE-LF2 |

Eth1/27 |

|

| FE-SP1 |

Eth1/11 |

400G |

FE-LF2 |

Eth1/28 |

|

| FE-SP1 |

Eth1/12 |

400G |

FE-LF2 |

Eth1/29 |

|

| FE-SP1 |

Eth1/63.4 |

100G |

Uplink Router |

||

| FE-SP1 |

Eth1/64.4 |

100G |

Uplink Router |

||

| FE-SP2 |

mgmt0 |

1G |

management switch |

||

| FE-SP2 |

Eth1/1 |

400G |

FE-SLF1 |

Eth1/30 |

|

| FE-SP2 |

Eth1/2 |

400G |

FE-SLF1 |

Eth1/31 |

|

| FE-SP2 |

Eth1/3 |

400G |

FE-SLF1 |

Eth1/32 |

|

| FE-SP2 |

Eth1/4 |

400G |

FE-SLF2 |

Eth1/30 |

|

| FE-SP2 |

Eth1/5 |

400G |

FE-SLF2 |

Eth1/31 |

|

| FE-SP2 |

Eth1/6 |

400G |

FE-SLF2 |

Eth1/32 |

|

| FE-SP2 |

Eth1/7 |

400G |

FE-LF1 |

Eth1/30 |

|

| FE-SP2 |

Eth1/8 |

400G |

FE-LF1 |

Eth1/31 |

|

| FE-SP2 |

Eth1/9 |

400G |

FE-LF1 |

Eth1/32 |

|

| FE-SP2 |

Eth1/10 |

400G |

FE-LF2 |

Eth1/30 |

|

| FE-SP2 |

Eth1/11 |

400G |

FE-LF2 |

Eth1/31 |

|

| FE-SP2 |

Eth1/12 |

400G |

FE-LF2 |

Eth1/32 |

|

| FE-SP2 |

Eth1/63.4 |

100G |

Uplink Router |

||

| FE-SP2 |

Eth1/64.4 |

100G |

Uplink Router |

| Device |

Port |

Speed |

Device |

Port |

Comment |

| Head Node |

Management |

1G |

management switch |

CIMC |

|

| Head Node |

VIC0 |

100G |

FE-LF1 |

Eth1/21 |

|

| Head Node |

VIC1 |

100G |

FE-LF2 |

Eth1/21 |

|

| C885A-1 |

Management |

1G |

management switch |

CIMC |

|

| C885A-1 |

BF 0 |

200G |

FE-LF1 |

Eth1/1 |

N-S |

| C885A-1 |

BF 1 |

200G |

FE-LF2 |

Eth1/1 |

N-S |

| C885A-1 |

CX-7 1 |

400G |

BE-LF1 |

Eth1/1 |

E-W |

| C885A-1 |

CX-7 2 |

400G |

BE-LF2 |

Eth1/1 |

E-W |

| C885A-1 |

CX-7 3 |

400G |

BE-LF1 |

Eth1/2 |

E-W |

| C885A-1 |

CX-7 4 |

400G |

BE-LF2 |

Eth1/2 |

E-W |

| C885A-1 |

CX-7 5 |

400G |

BE-LF1 |

Eth1/3 |

E-W |

| C885A-1 |

CX-7 6 |

400G |

BE-LF2 |

Eth1/3 |

E-W |

| C885A-1 |

CX-7 7 |

400G |

BE-LF1 |

Eth1/4 |

E-W |

| C885A-1 |

CX-7 8 |

400G |

BE-LF2 |

Eth1/4 |

E-W |

| C885A-2 |

Management |

1G |

management switch |

CIMC |

|

| C885A-2 |

BF 0 |

200G |

FE-LF1 |

Eth1/2 |

N-S |

| C885A-2 |

BF 1 |

200G |

FE-LF2 |

Eth1/2 |

N-S |

| C885A-2 |

CX-7 1 |

400G |

BE-LF1 |

Eth1/5 |

E-W |

| C885A-2 |

CX-7 2 |

400G |

BE-LF2 |

Eth1/5 |

E-W |

| C885A-2 |

CX-7 3 |

400G |

BE-LF1 |

Eth1/6 |

E-W |

| C885A-2 |

CX-7 4 |

400G |

BE-LF2 |

Eth1/6 |

E-W |

| C885A-2 |

CX-7 5 |

400G |

BE-LF1 |

Eth1/7 |

E-W |

| C885A-2 |

CX-7 6 |

400G |

BE-LF2 |

Eth1/7 |

E-W |

| C885A-2 |

CX-7 7 |

400G |

BE-LF1 |

Eth1/8 |

E-W |

| C885A-2 |

CX-7 8 |

400G |

BE-LF2 |

Eth1/8 |

E-W |

| C885A-3 |

Management |

1G |

management switch |

CIMC |

|

| C885A-3 |

BF 0 |

200G |

FE-LF1 |

Eth1/3 |

N-S |

| C885A-3 |

BF 1 |

200G |

FE-LF2 |

Eth1/3 |

N-S |

| C885A-3 |

CX-7 1 |

400G |

BE-LF1 |

Eth1/9 |

E-W |

| C885A-3 |

CX-7 2 |

400G |

BE-LF2 |

Eth1/9 |

E-W |

| C885A-3 |

CX-7 3 |

400G |

BE-LF1 |

Eth1/10 |

E-W |

| C885A-3 |

CX-7 4 |

400G |

BE-LF2 |

Eth1/10 |

E-W |

| C885A-3 |

CX-7 5 |

400G |

BE-LF1 |

Eth1/11 |

E-W |

| C885A-3 |

CX-7 6 |

400G |

BE-LF2 |

Eth1/11 |

E-W |

| C885A-3 |

CX-7 7 |

400G |

BE-LF1 |

Eth1/12 |

E-W |

| C885A-3 |

CX-7 8 |

400G |

BE-LF2 |

Eth1/12 |

E-W |

| C885A-4 |

Management |

1G |

management switch |

CIMC |

|

| C885A-4 |

BF 0 |

200G |

FE-LF1 |

Eth1/4 |

N-S |

| C885A-4 |

BF 1 |

200G |

FE-LF2 |

Eth1/4 |

N-S |

| C885A-4 |

CX-7 1 |

400G |

BE-LF1 |

Eth1/13 |

E-W |

| C885A-4 |

CX-7 2 |

400G |

BE-LF2 |

Eth1/13 |

E-W |

| C885A-4 |

CX-7 3 |

400G |

BE-LF1 |

Eth1/14 |

E-W |

| C885A-4 |

CX-7 4 |

400G |

BE-LF2 |

Eth1/14 |

E-W |

| C885A-4 |

CX-7 5 |

400G |

BE-LF1 |

Eth1/15 |

E-W |

| C885A-4 |

CX-7 6 |

400G |

BE-LF2 |

Eth1/15 |

E-W |

| C885A-4 |

CX-7 7 |

400G |

BE-LF1 |

Eth1/16 |

E-W |

| C885A-4 |

CX-7 8 |

400G |

BE-LF2 |

Eth1/16 |

E-W |

Network Switch Configuration

This chapter contains the following:

● Cisco Nexus Dashboard SetupCisco Nexus Dashboard Setup

● Nexus Frontend Fabric SetupNexus Frontend Fabric Setup

● Nexus Backend Fabric SetupNexus Backend Fabric Setup

In this lab configuration, Cisco Nexus Dashboard is used to create and configure the Backend and Frontend Fabrics. Nexus Dashboard is available in both physical and virtual form factors. In this lab configuration, three Nexus Dashboard physical nodes were installed into a cluster. Please see Nexus Dashboard Capacity Planning and Cisco Nexus Dashboard Data Sheet to determine the form factor and cluster size for your deployment. Then install Nexus Dashboard

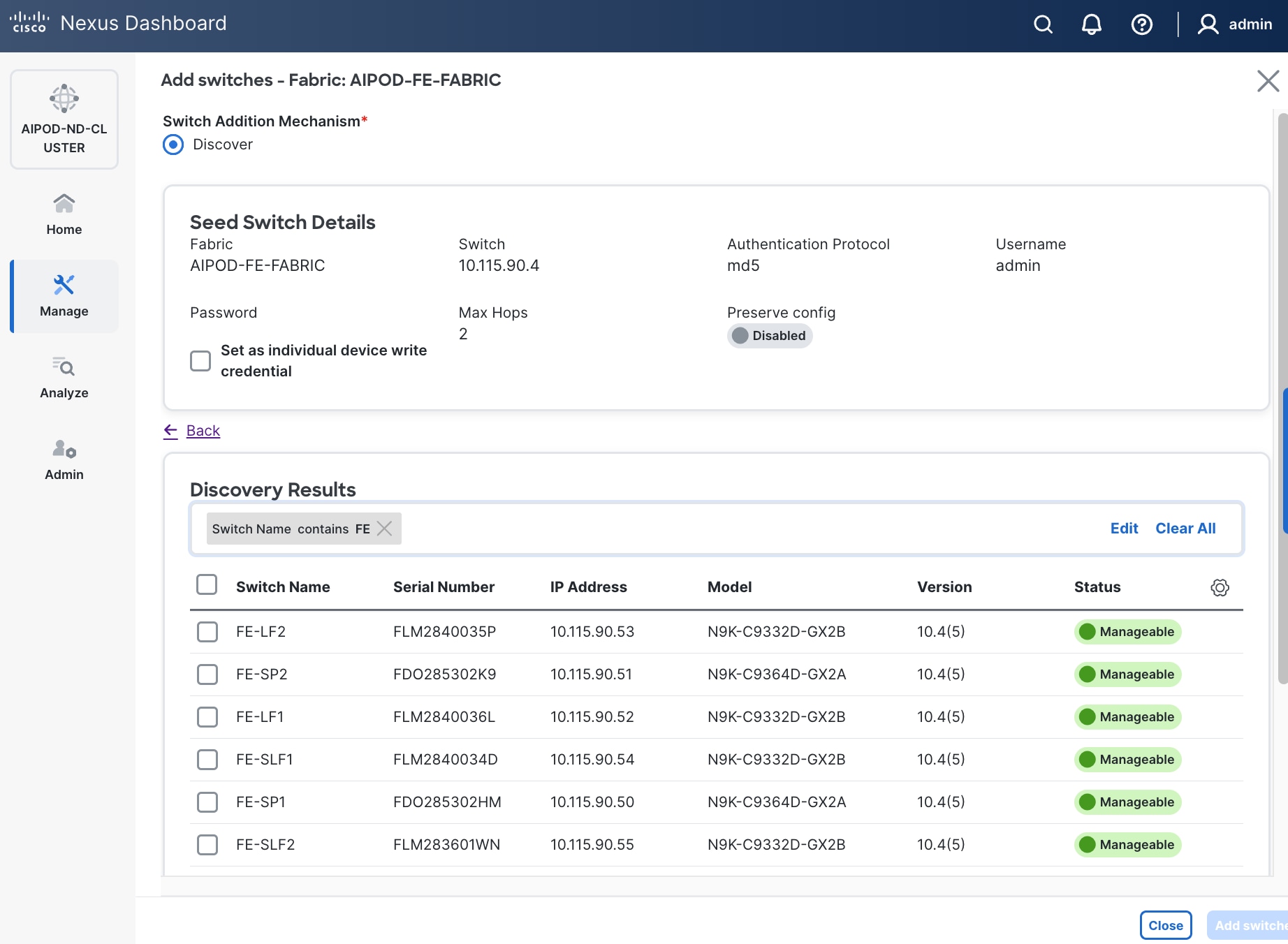

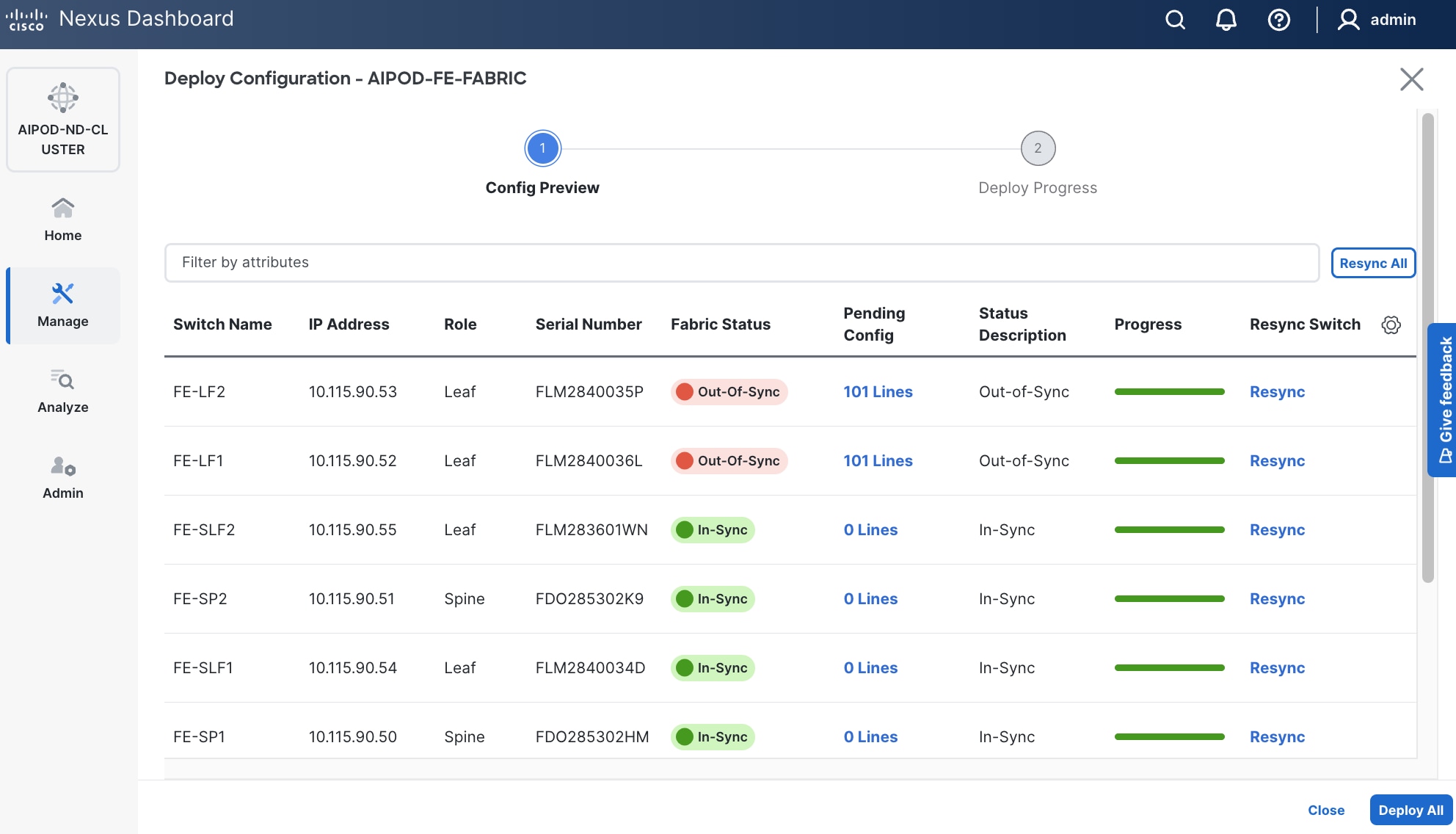

In this setup, the Nexus Frontend Fabric consisted of 2 spine and 4 leaf switches. This fabric was cabled according to Table 4. The fabric switch details are listed in Table 7.

Table 7. Frontend Fabric Switch Details

| Switch |

Role |

OOB IP |

Firmware |

Model |

| FE-LF1 |

Leaf |

10.115.90.52 |

10.4(5) |

Cisco Nexus 9332D-GX2B |

| FE-LF2 |

Leaf |

10.115.90.53 |

10.4(5) |

Cisco Nexus 9332D-GX2B |

| FE-SLF1 |

Storage Leaf |

10.115.90.54 |

10.4(5) |

Cisco Nexus 9332D-GX2B |

| FE-SLF2 |

Storage Leaf |

10.115.90.55 |

10.4(5) |

Cisco Nexus 9332D-GX2B |

| FE-SP1 |

Spine |

10.115.90.50 |

10.4(5) |

Cisco Nexus 9364D-GX2A |

| FE-SP2 |

Spine |

10.115.90.51 |

10.4(5) |

Cisco Nexus 9364D-GX2A |

Physical Connectivity

Follow the physical connectivity guidelines for FlexPod as explained in section FlexPod Cabling.

Initial Configuration of Switches

The following procedures describe this basic configuration of the Cisco Nexus frontend fabric switches for use in the FlexPod environment. This procedure assumes the use of Cisco Nexus 9000 10.4(5), the Cisco suggested Nexus switch release at the time of this validation.

Procedure 1. Set Up Initial Configuration from a serial console

Set up the initial configuration for each backend fabric switch from Table 7 above.

Step 1. Configure the switch.

Note: On initial boot, the NX-OS setup automatically starts and attempts to enter Power on Auto Provisioning.

Abort Power On Auto Provisioning [yes - continue with normal setup, skip - bypass password and basic configuration, no - continue with Power On Auto Provisioning] (yes/skip/no)[no]: yes

Disabling POAP.......Disabling POAP

poap: Rolling back, please wait... (This may take 5-15 minutes)

---- System Admin Account Setup ----

Do you want to enforce secure password standard (yes/no) [y]: Enter

Enter the password for "admin": <password>

Confirm the password for "admin": <password>

Would you like to enter the basic configuration dialog (yes/no): yes

Create another login account (yes/no) [n]: Enter

Configure read-only SNMP community string (yes/no) [n]: Enter

Configure read-write SNMP community string (yes/no) [n]: Enter

Enter the switch name: <nexus-hostname>

Continue with Out-of-band (mgmt0) management configuration? (yes/no) [y]: Enter

Mgmt0 IPv4 address: <nexus-out_of_band_mgmt0-ip>

Mgmt0 IPv4 netmask: <nexus-mgmt0-netmask>

Configure the default gateway? (yes/no) [y]: Enter

IPv4 address of the default gateway: <nexus-mgmt0-gw>

Configure advanced IP options? (yes/no) [n]: Enter

Enable the telnet service? (yes/no) [n]: Enter

Enable the ssh service? (yes/no) [y]: Enter

Type of ssh key you would like to generate (dsa/rsa) [rsa]: Enter

Number of rsa key bits <1024-2048> [2048]: Enter

Configure the ntp server? (yes/no) [n]: Enter

Configure default interface layer (L3/L2) [L2]: Enter

Configure default switchport interface state (shut/noshut) [noshut]: Enter

Enter basic FC configurations (yes/no) [n]: n

Configure CoPP system profile (strict/moderate/lenient/dense) [strict]: Enter

Would you like to edit the configuration? (yes/no) [n]: Enter

Step 2. Review the configuration summary before enabling the configuration.

Use this configuration and save it? (yes/no) [y]: Enter

Step 3. Repeat this configuration for all switches in Table 7.

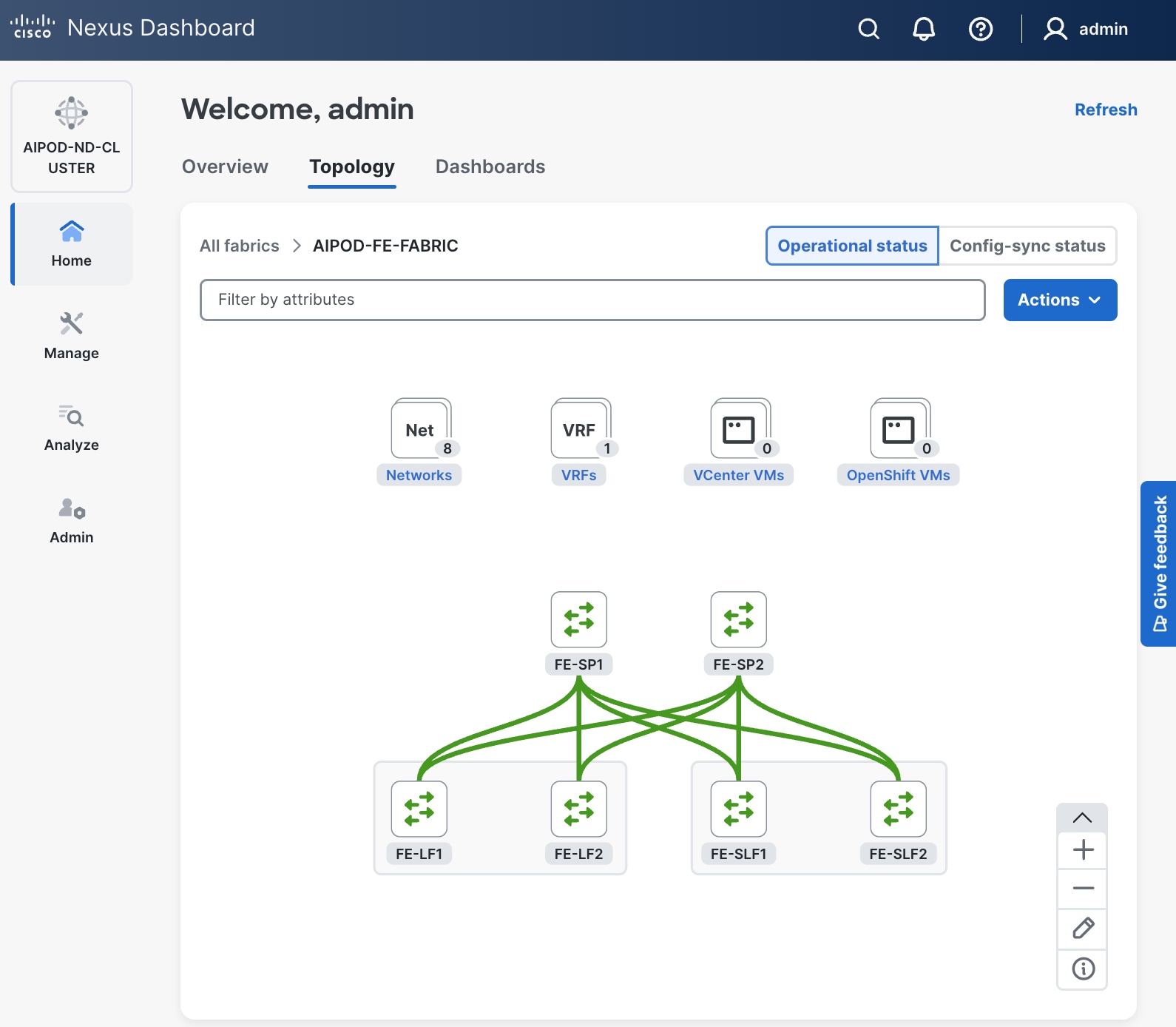

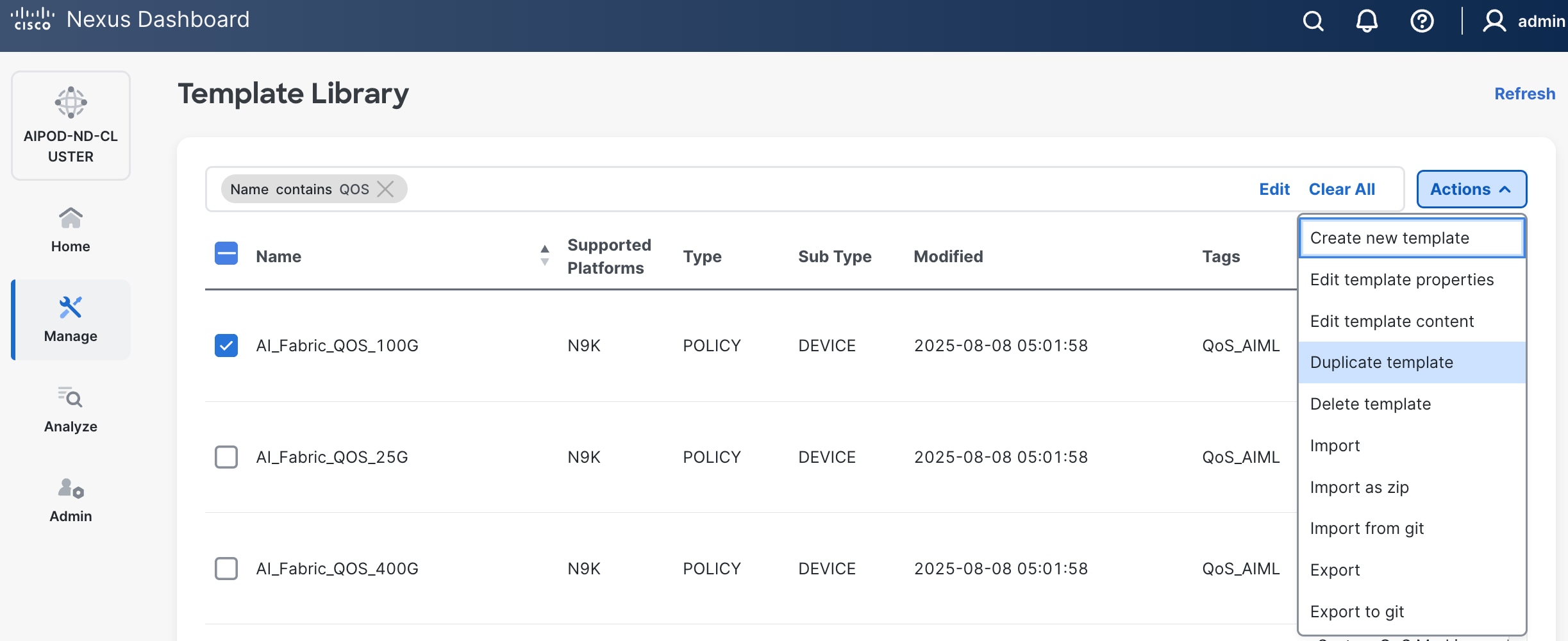

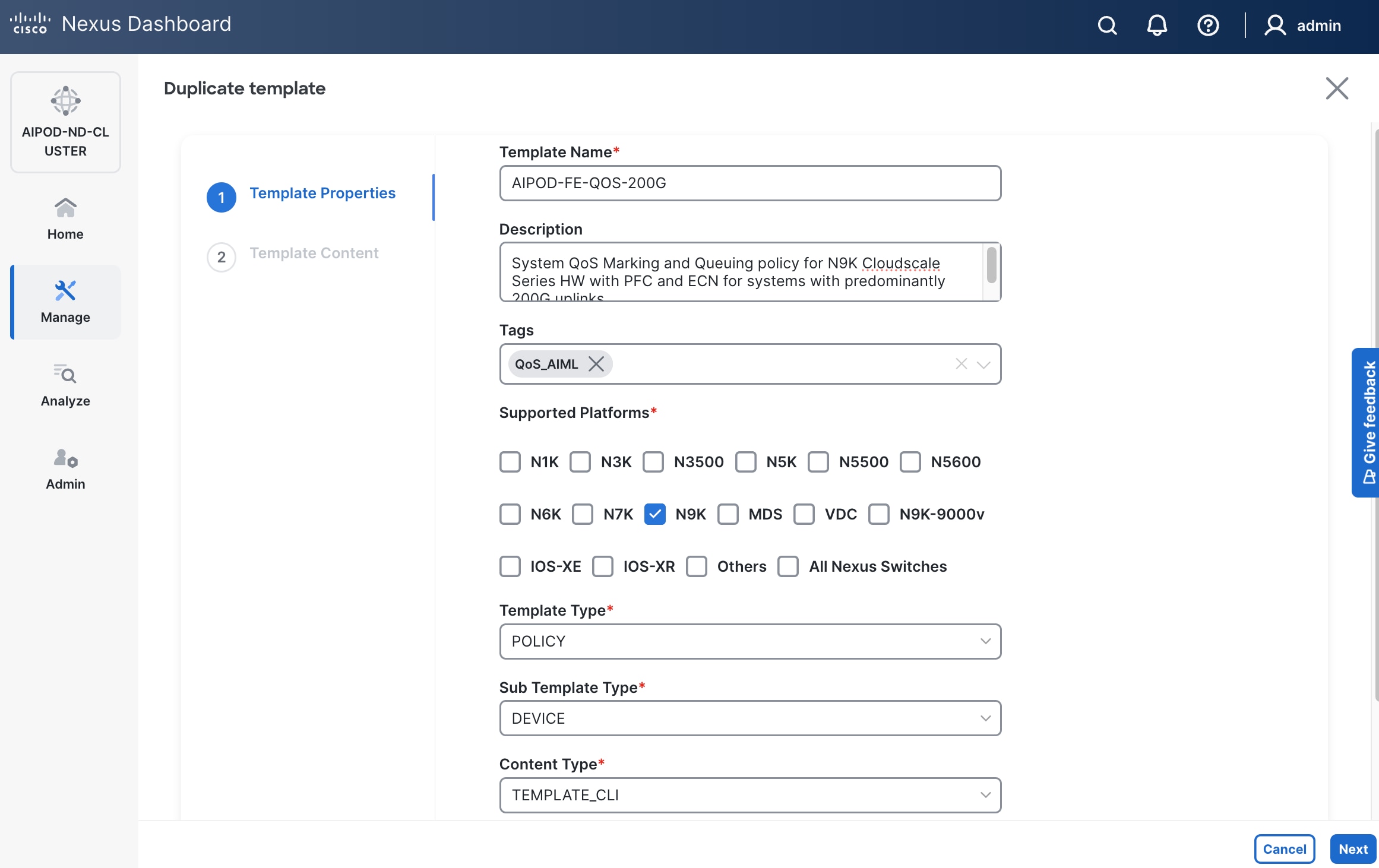

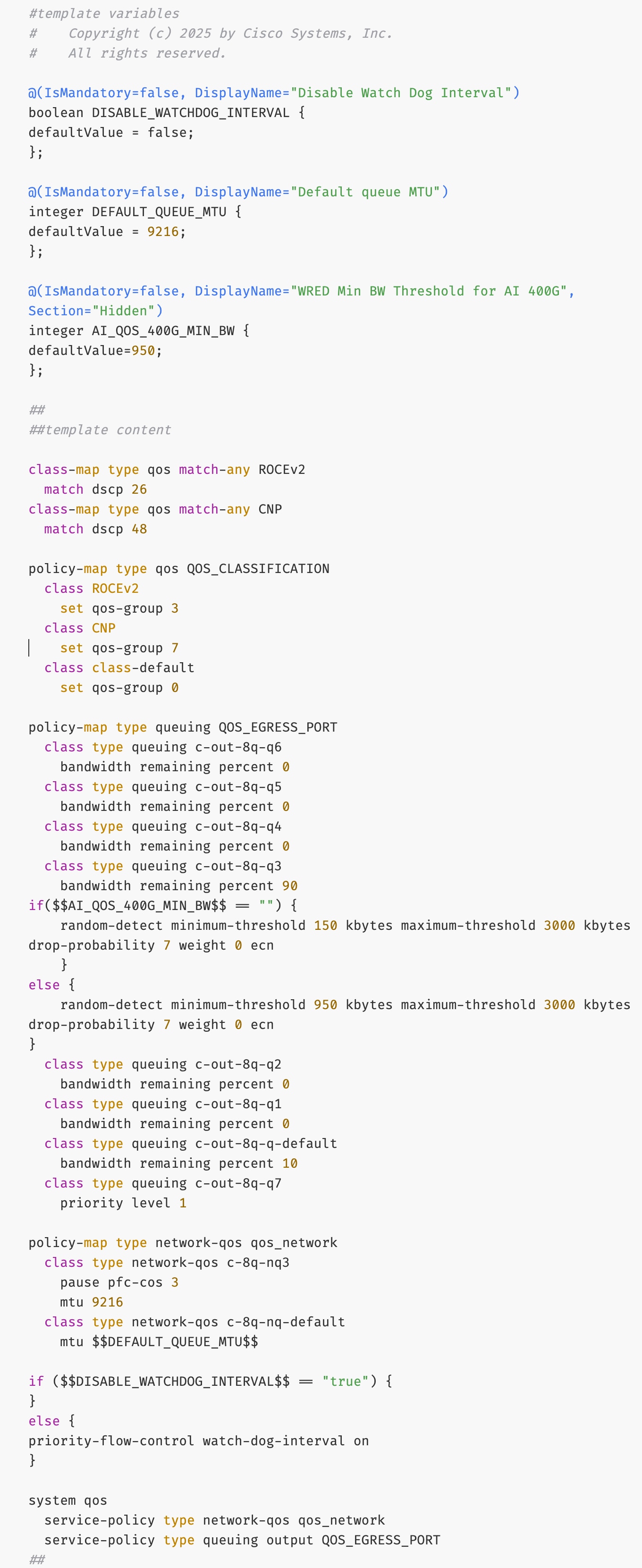

Deploy Frontend Fabric Using Nexus Dashboard

The procedures outlined in this section will use Cisco Nexus Dashboard (ND), specifically the fabric templates provided by ND, to deploy the frontend (FE) fabric in the AI POD solution. The frontend fabric is a 2-tier, 3-stage spine-leaf Clos topology, built using Cisco Nexus 9000 series data center switches. Once the fabric is deployed, ND will be used to provision connectivity between various infrastructure components connected to the frontend fabric. The Cisco UCS GPU servers in the AI POD training cluster will use the frontend (N-S) NIC to connect to the FE fabric.

The procedures in this section will:

● Deploy a VXLAN EVPN fabric on the frontend leaf and spine switches, connected in a 2-tier spine-leaf topology.

● Enable Virtual Port Channel (vPC) peering on compute/management leaf pairs and storage leaf pairs in the frontend fabric.

● Provision connectivity to UCS servers that will be used to host the control plane and workload management components for the AI workloads running on UCS GPU servers.

● Provisioning external connectivity from the frontend fabric to other enterprise internal and external networks. This includes connectivity to Cisco Intersight, Red Hat Hybrid Cloud Console and other SaaS services used in the AI POD solution.

● Provision any connectivity required to bring up the storage system.

● Enable connectivity between UCS management and storage, as well as from UCS GPU nodes to storage.

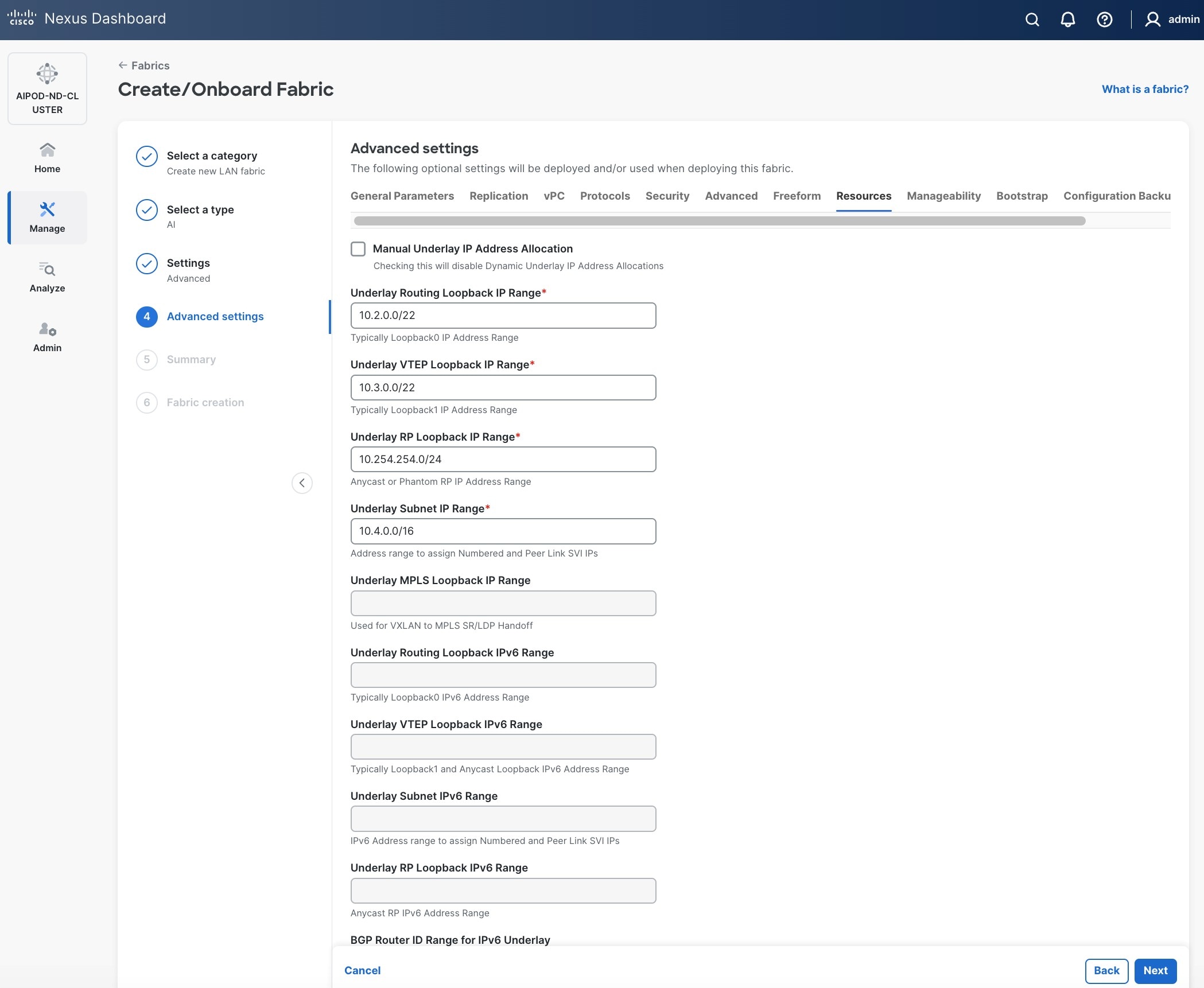

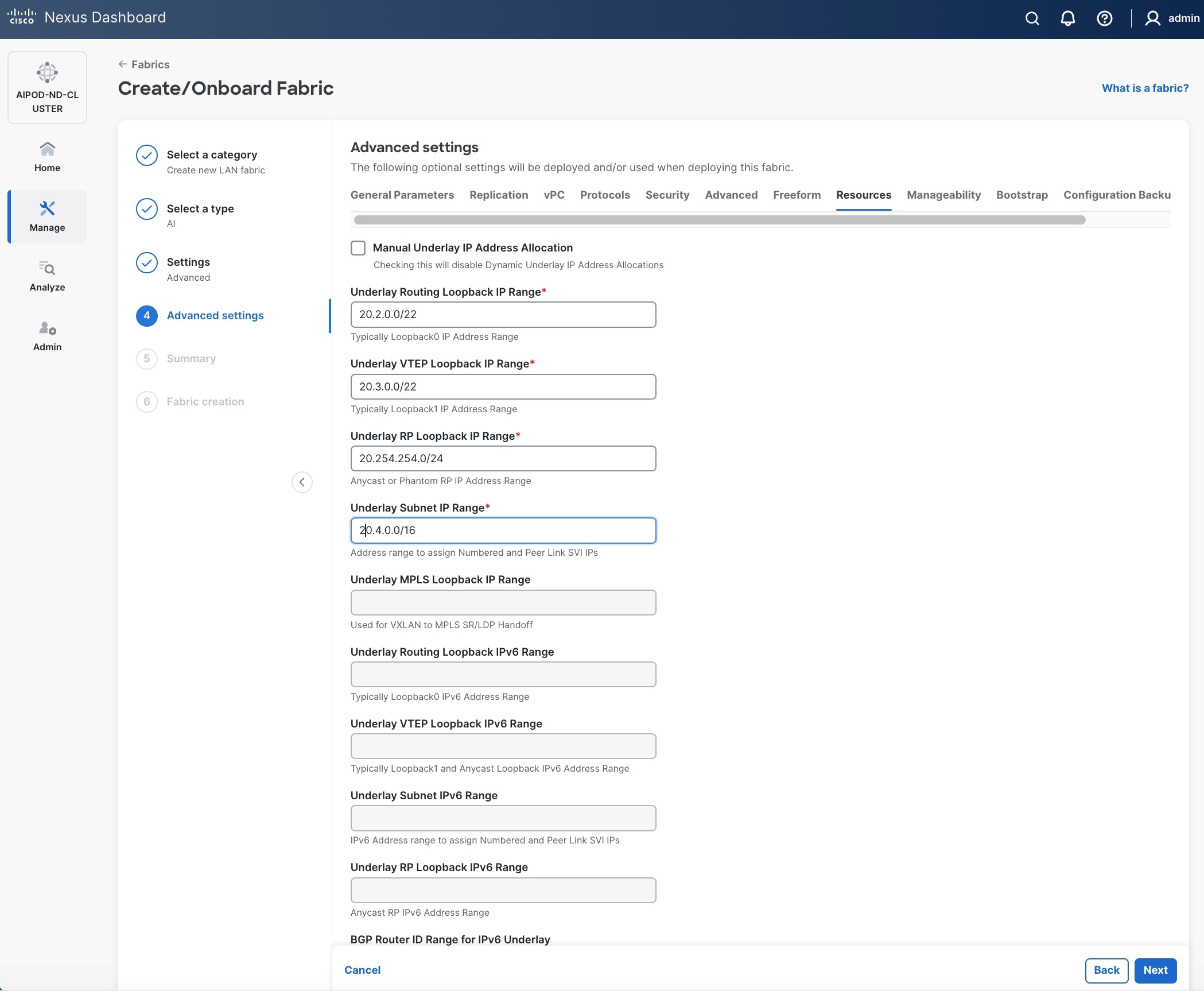

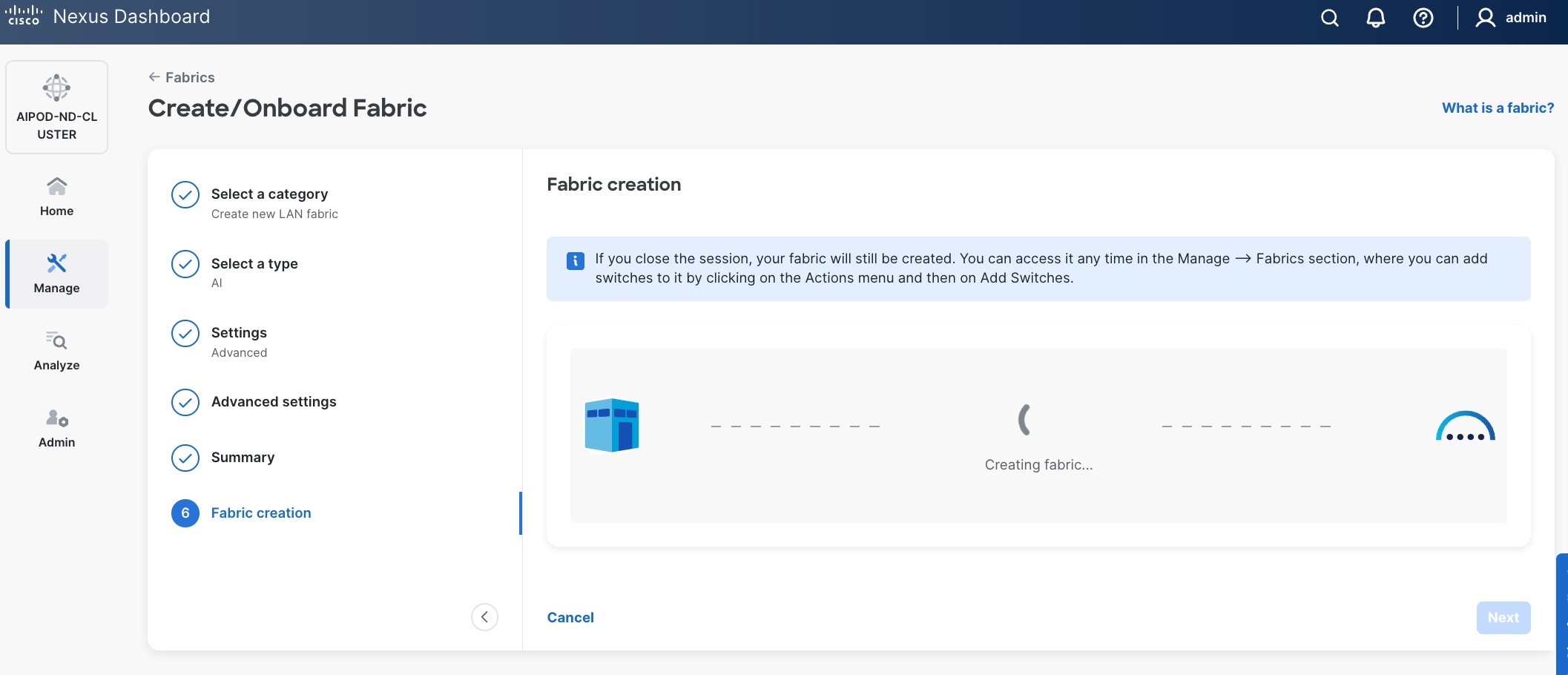

Procedure 1. Deploy VXLAN EVPN fabric on the two-tier spine and leaf switches

Step 1. Use a web browser to navigate to the management IP of any node in the Nexus Dashboard cluster. Log in using the admin account.

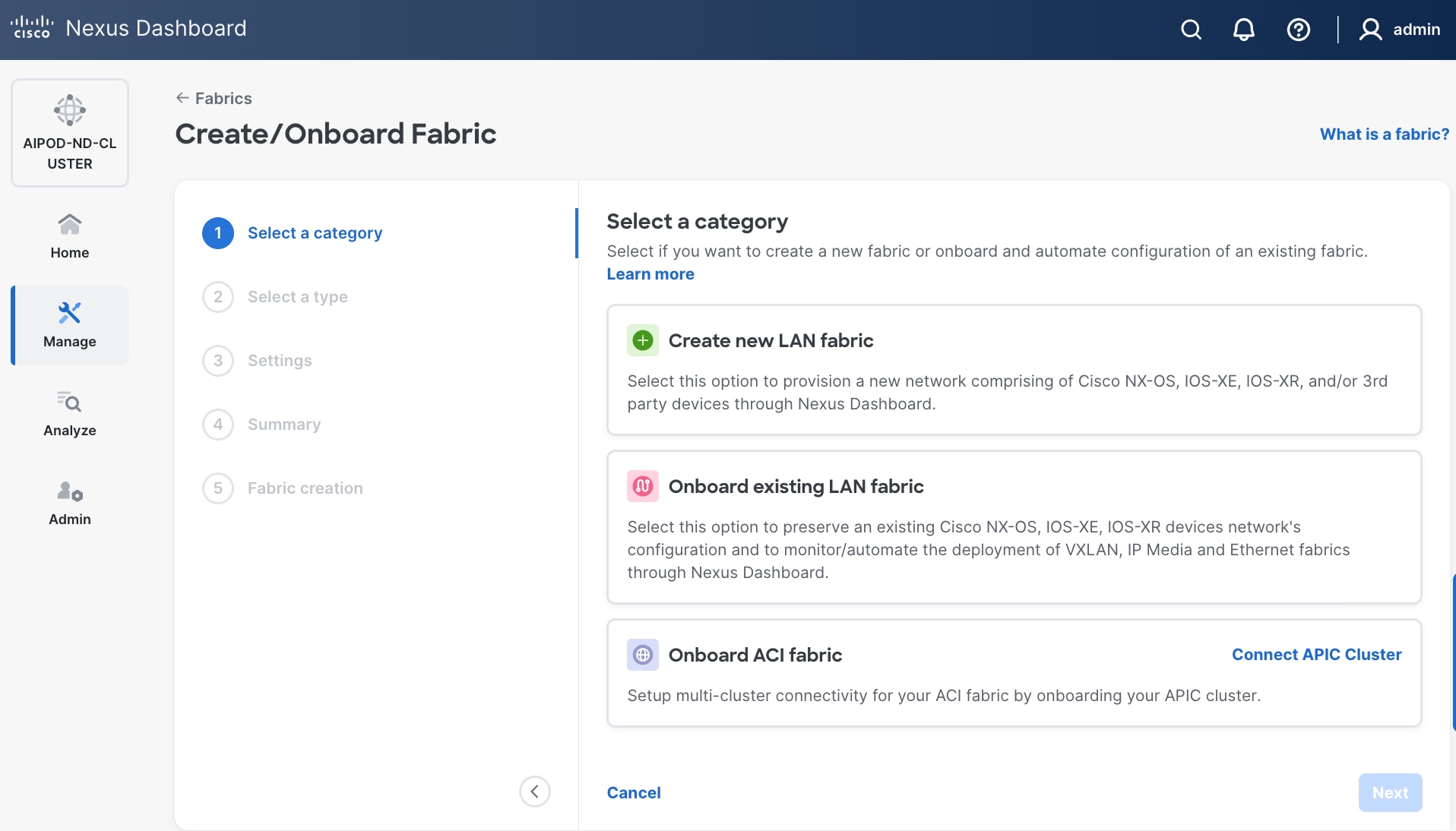

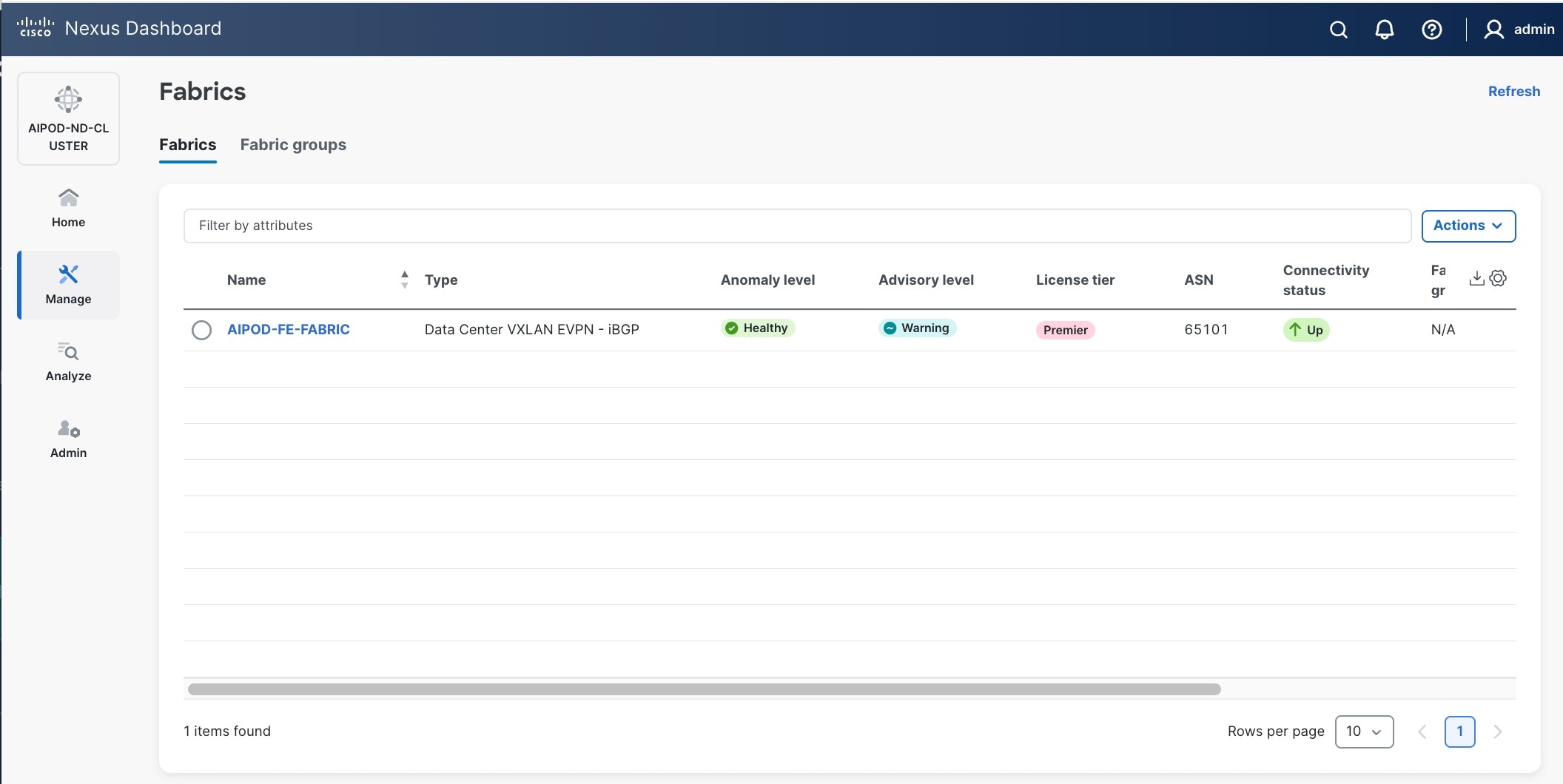

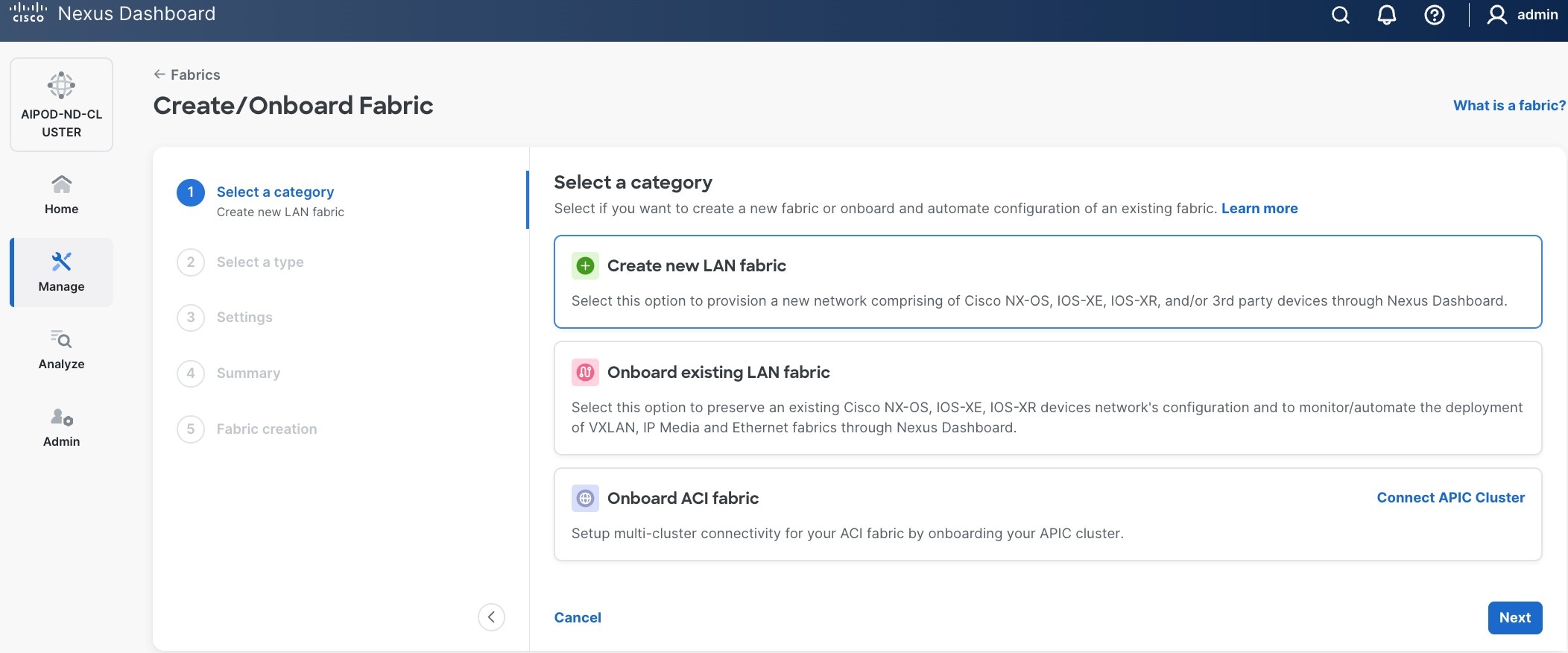

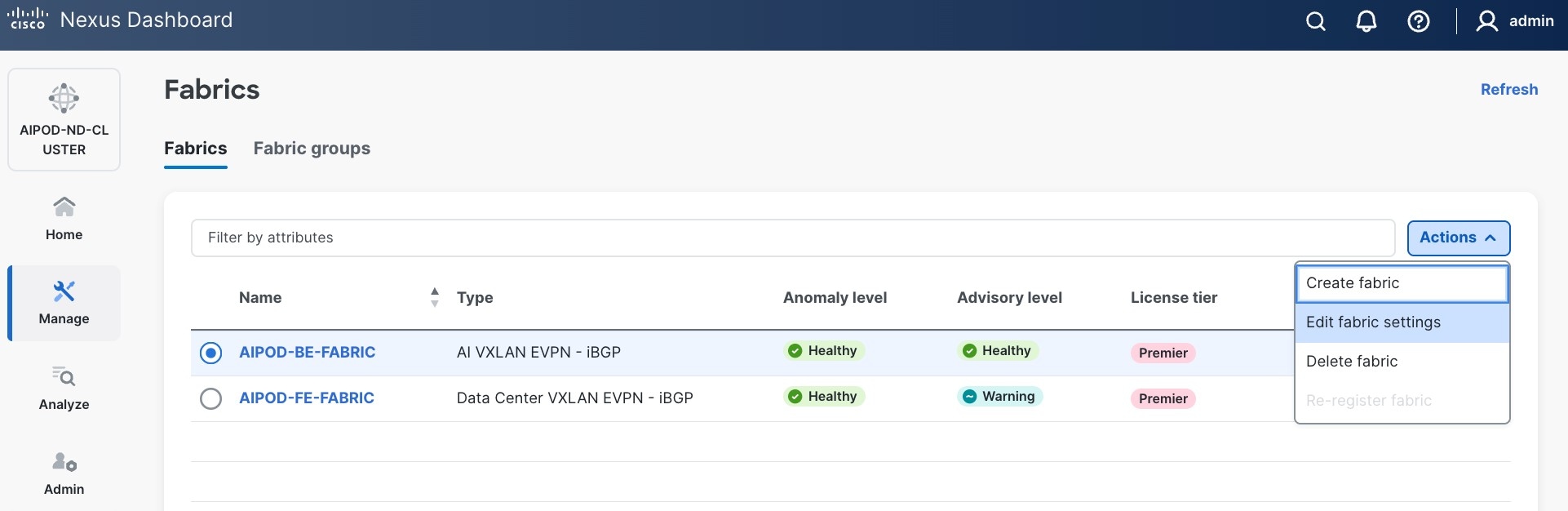

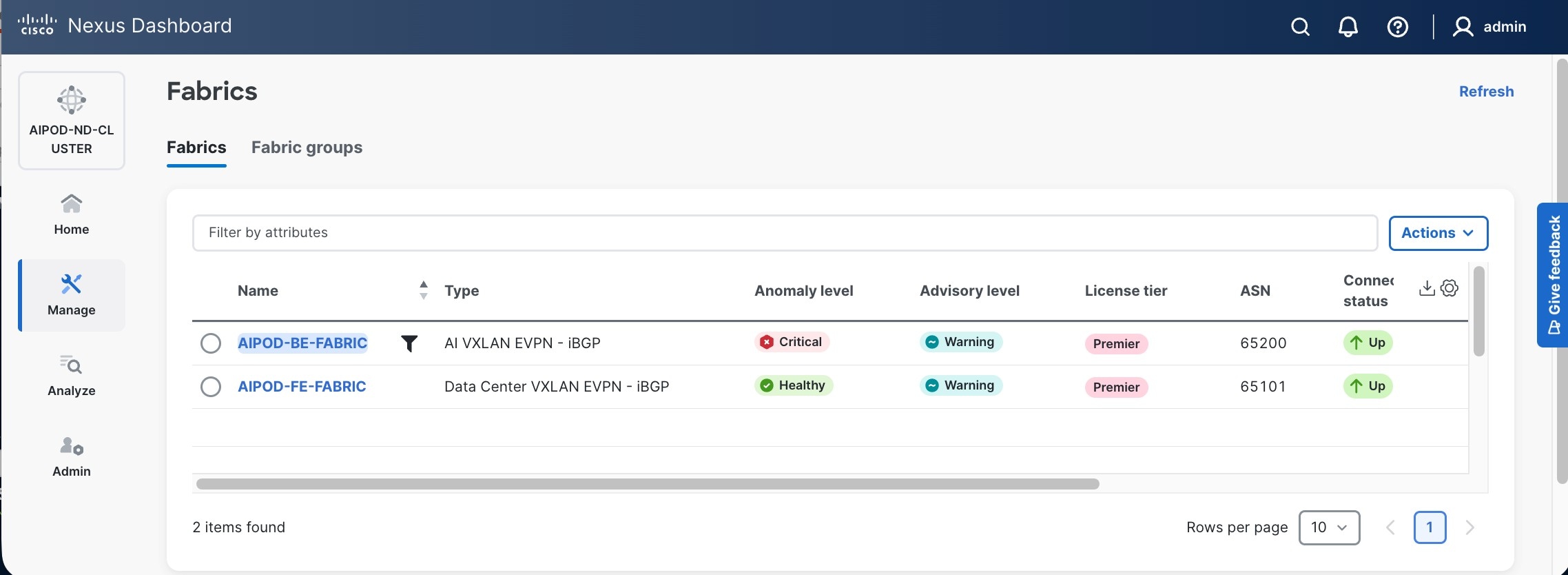

Step 2. From the left navigation menu, go to Manage > Fabrics.

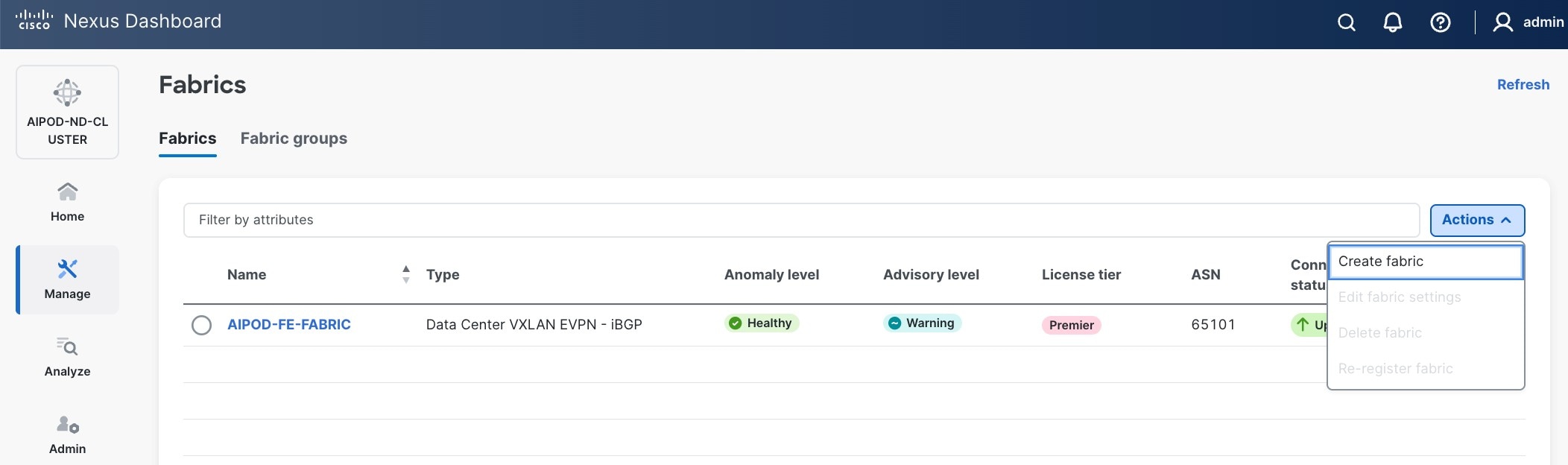

Step 3. Click Actions and select Create Fabric from the drop-down list.

Step 4. Select Create a new LAN fabric box. Click Next.

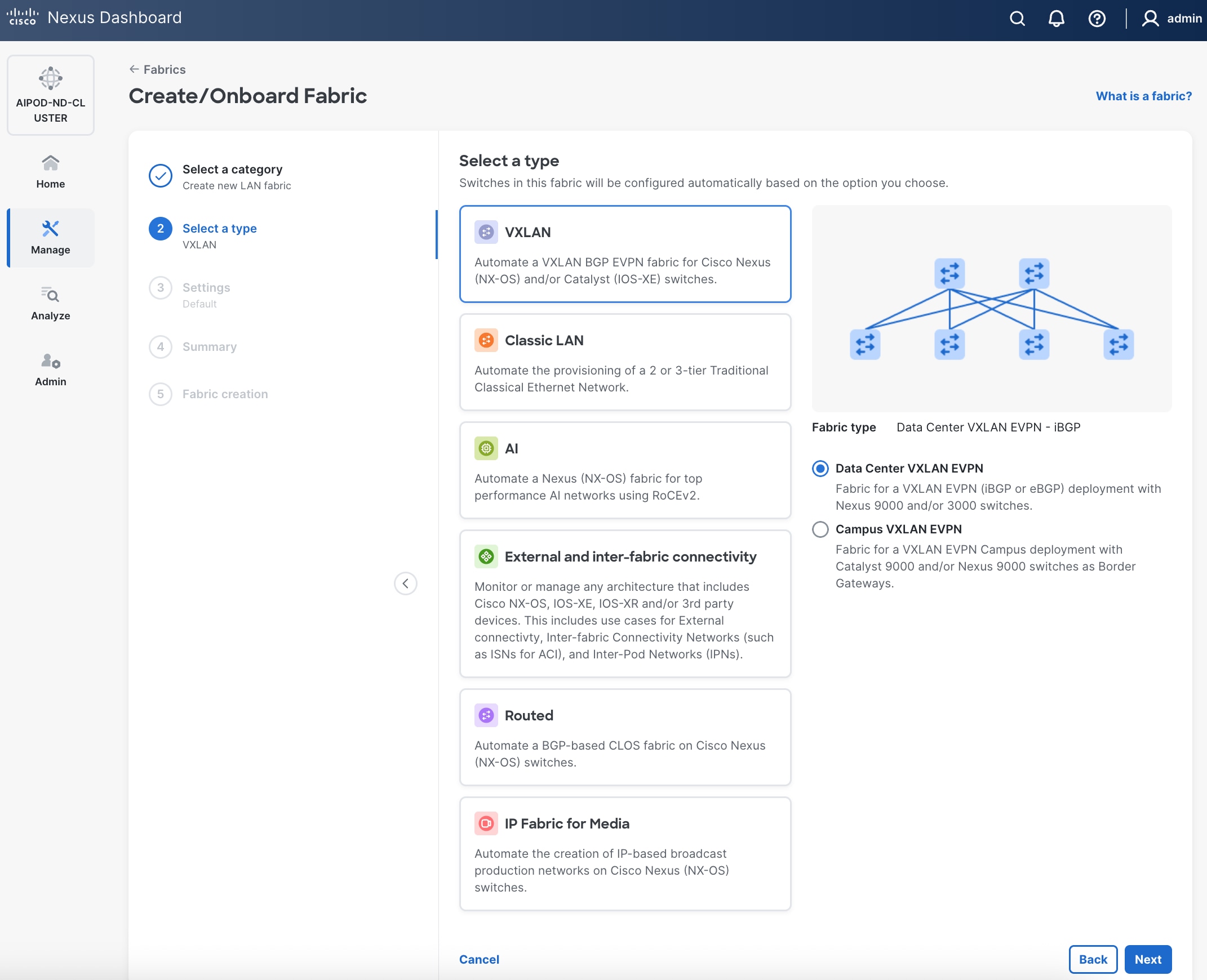

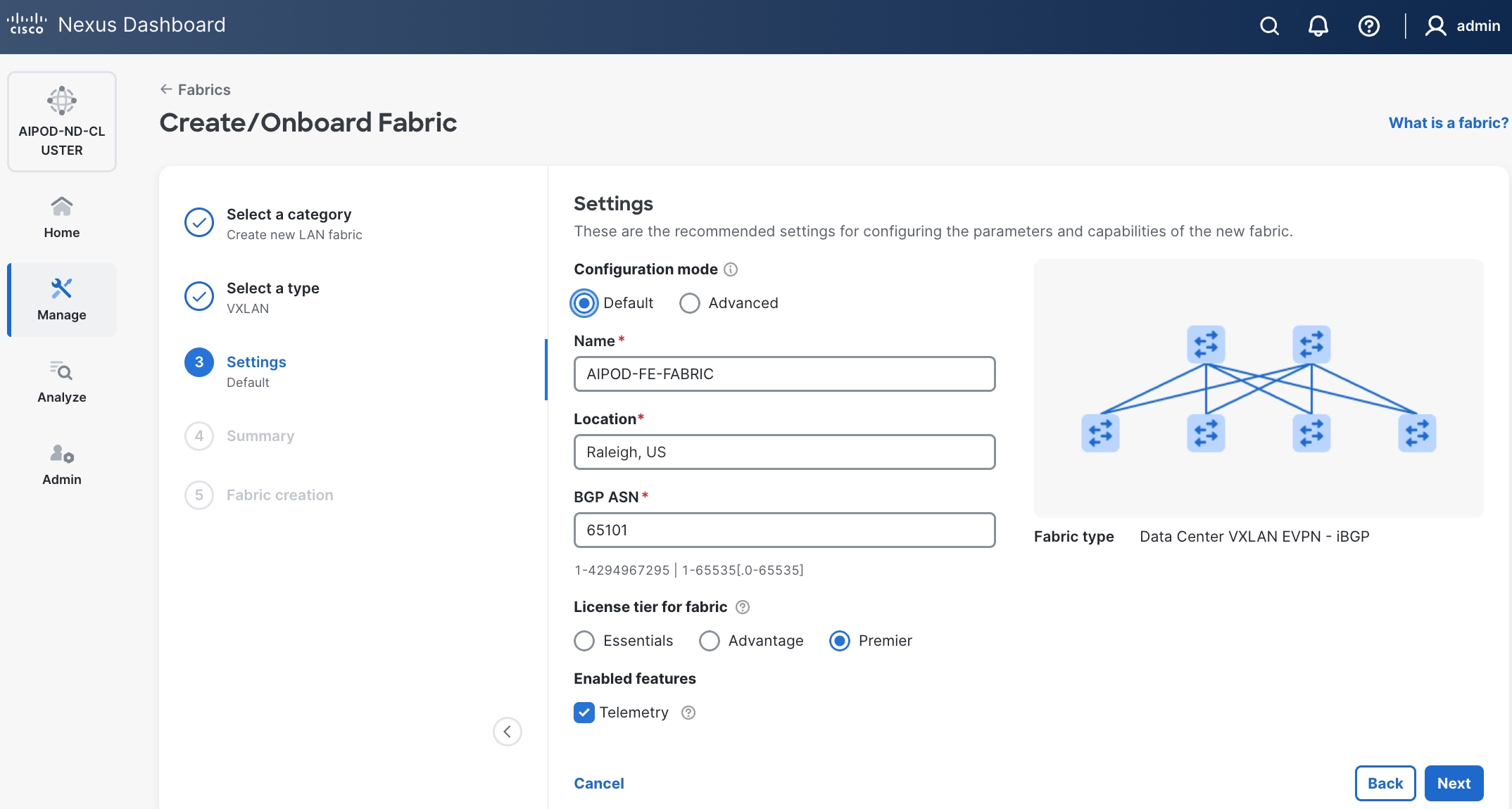

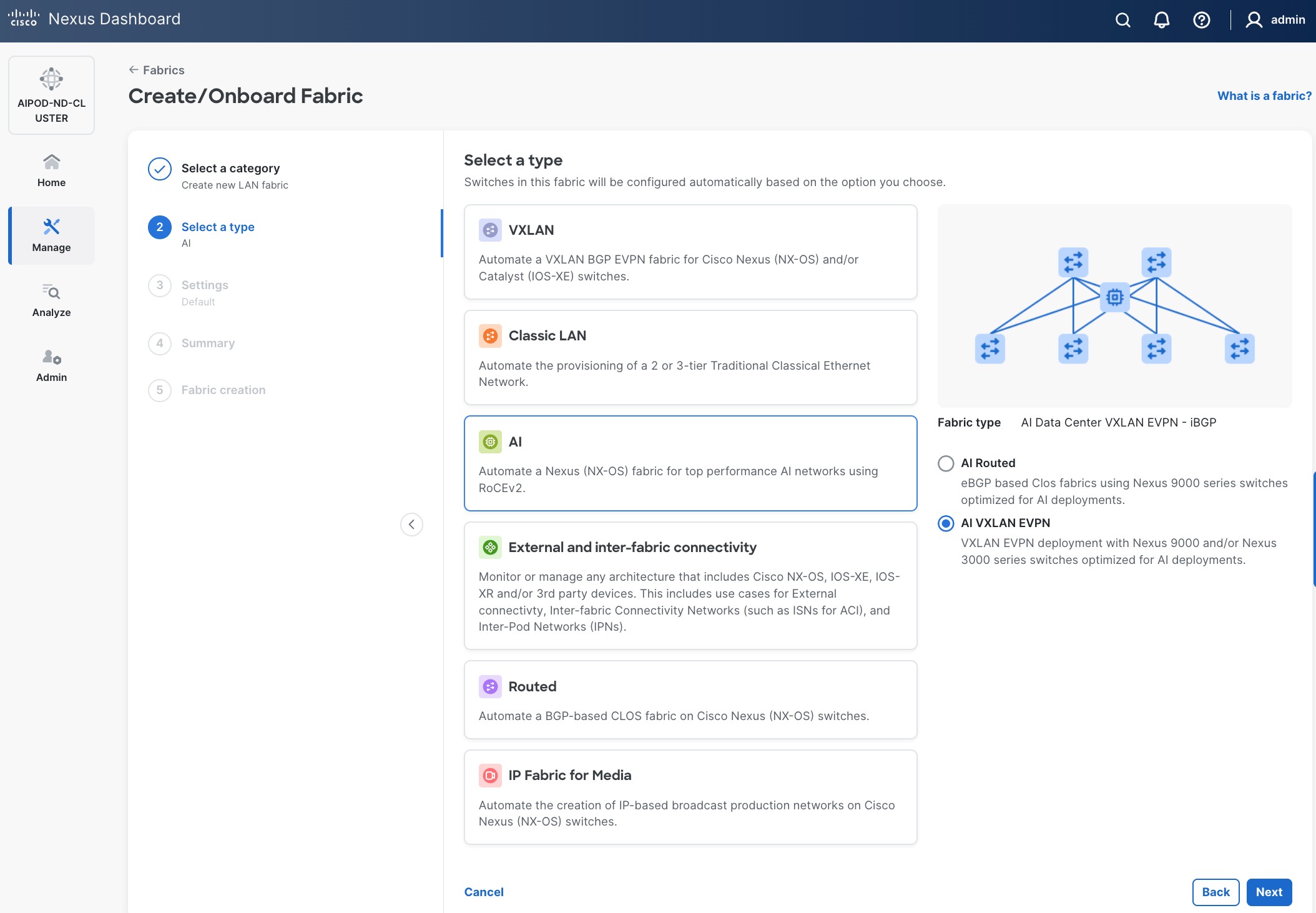

Step 5. Select VXLAN and radio button for Data Center VXLAN EVPN for the fabric type. Click Next.

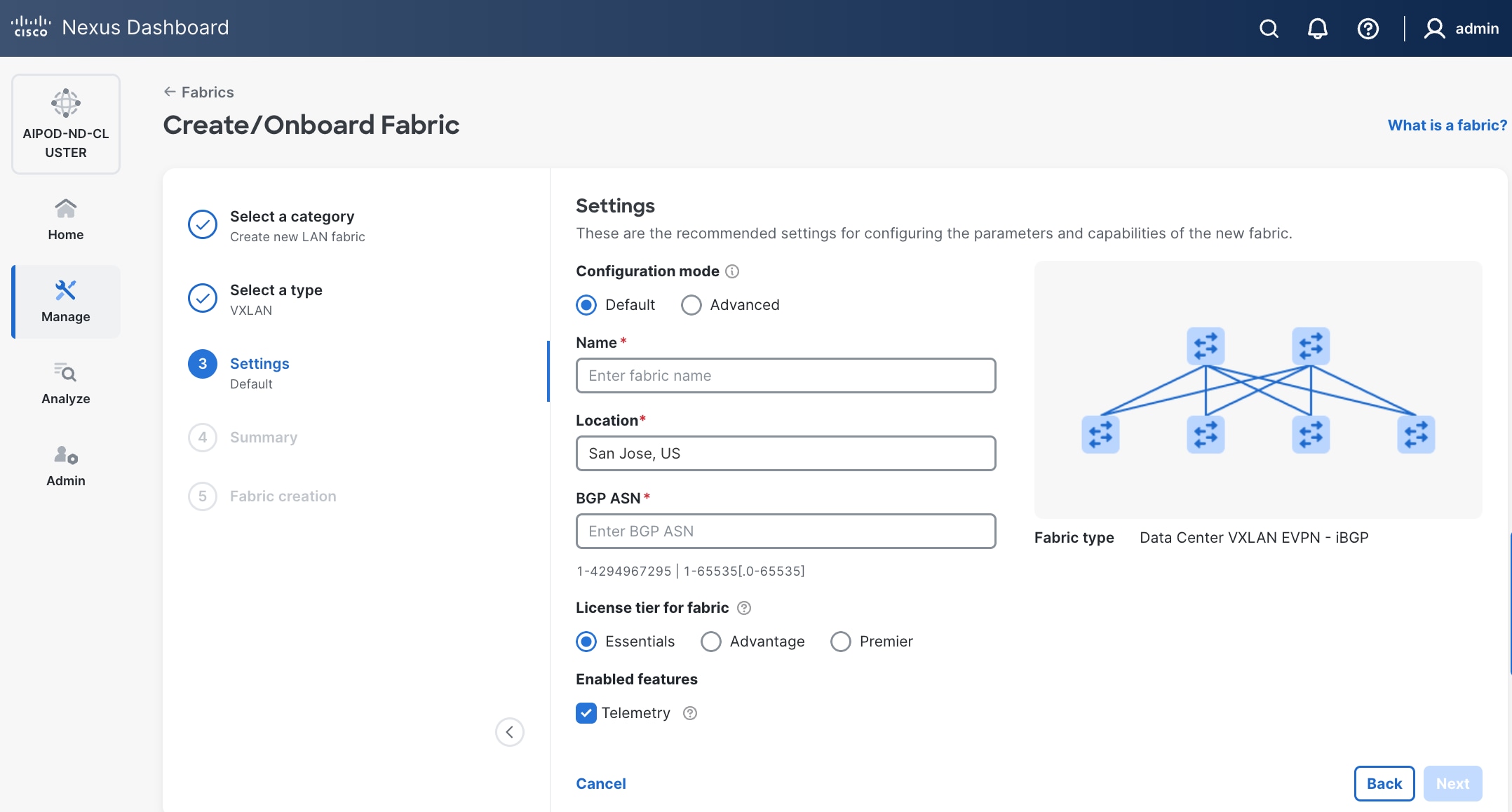

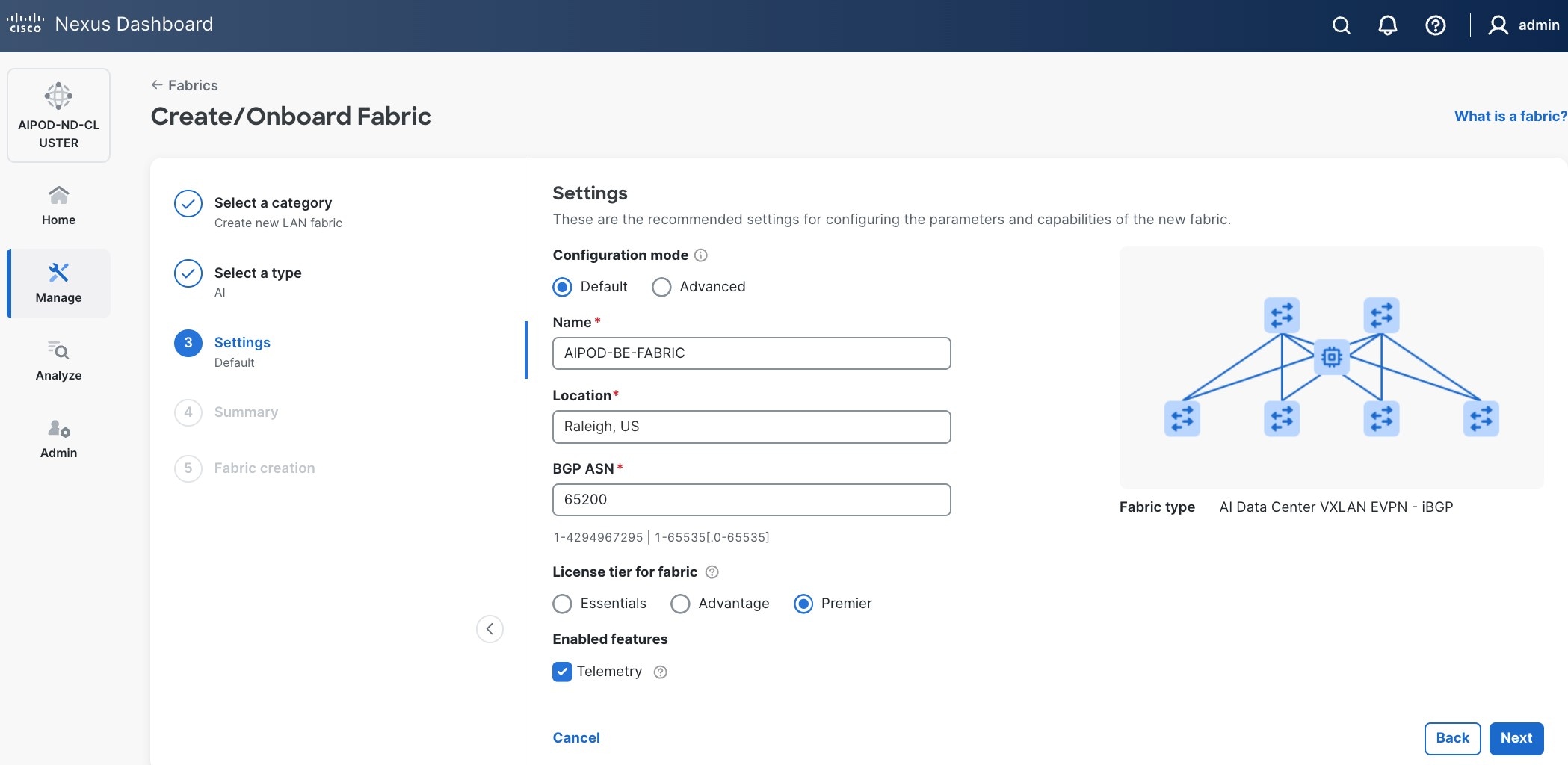

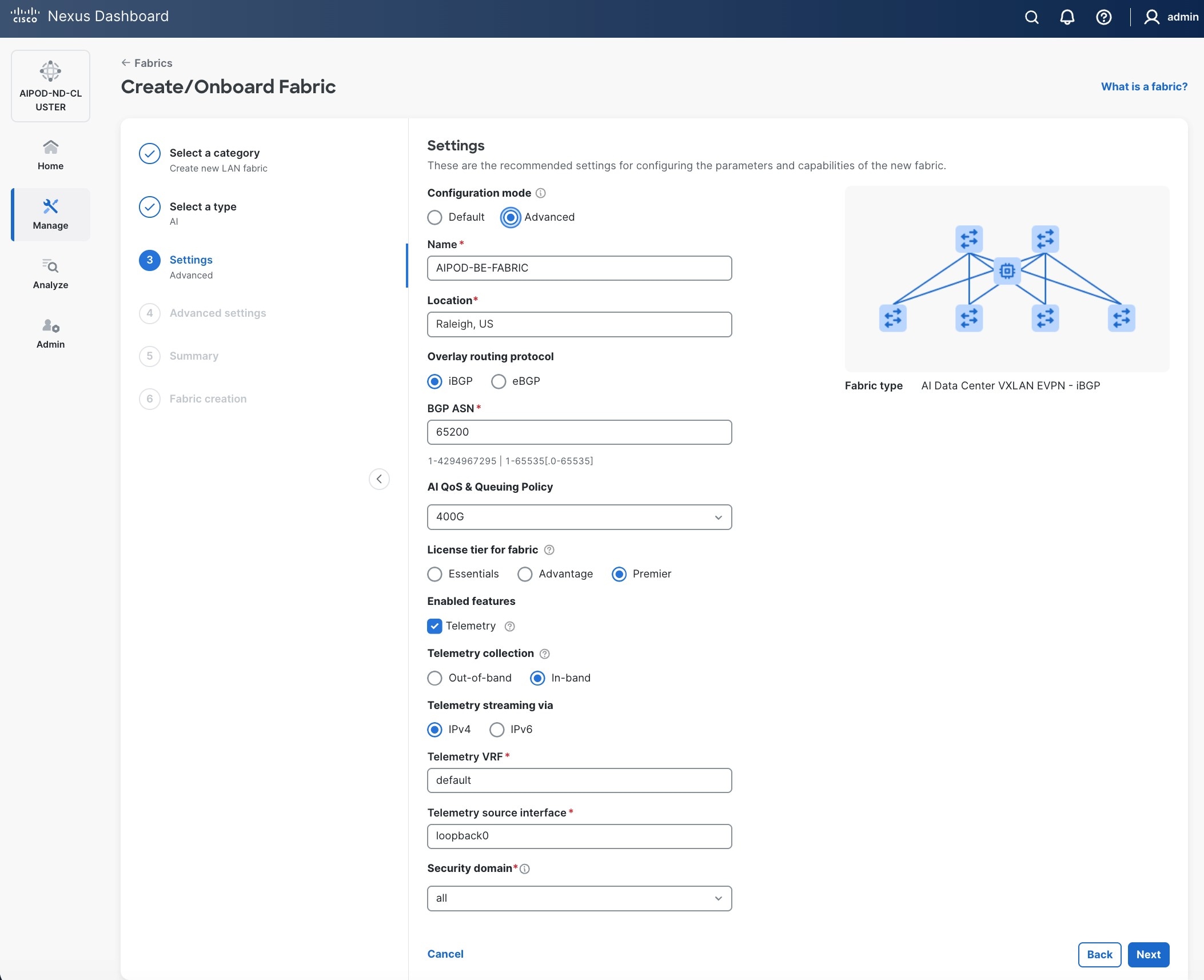

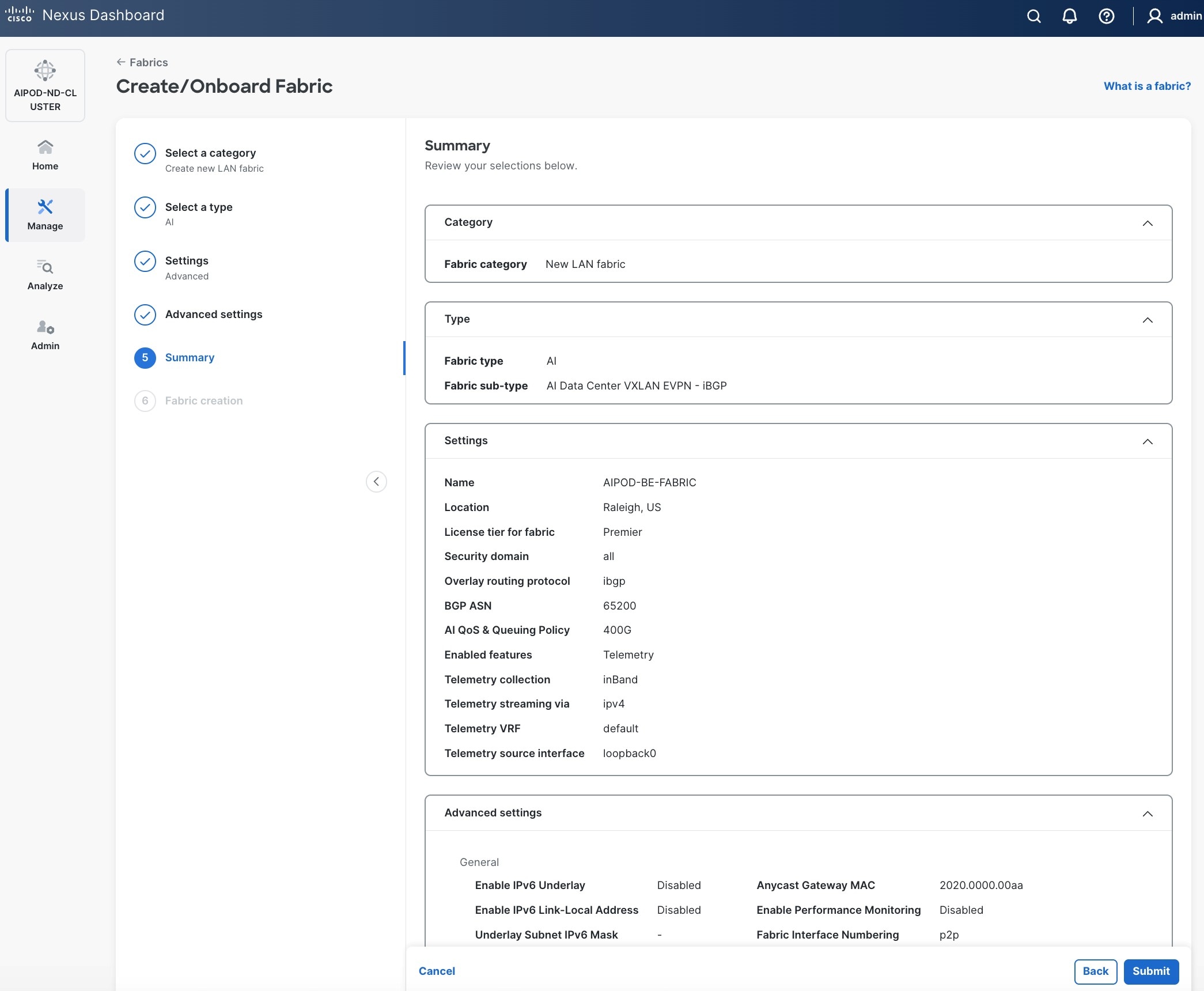

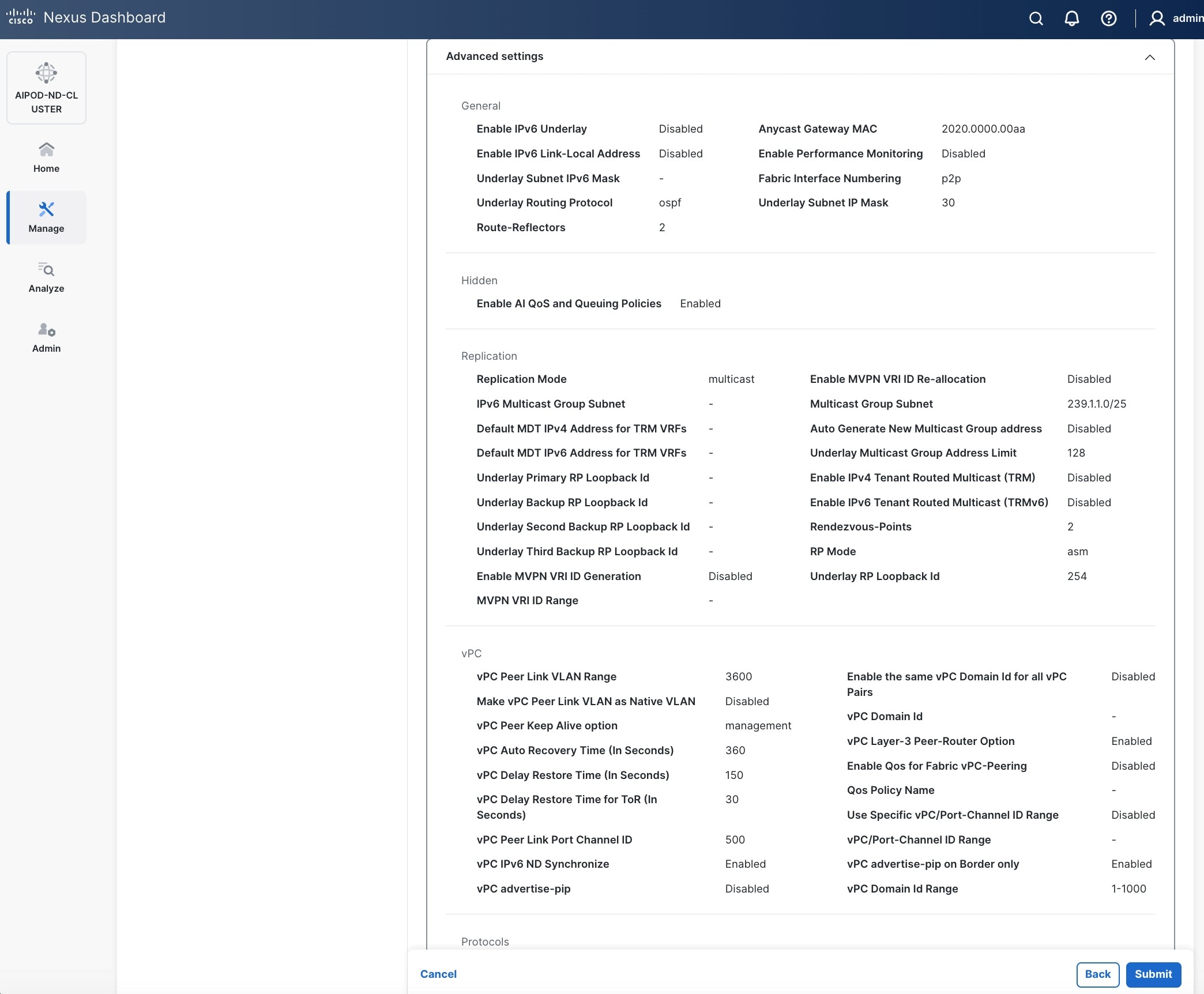

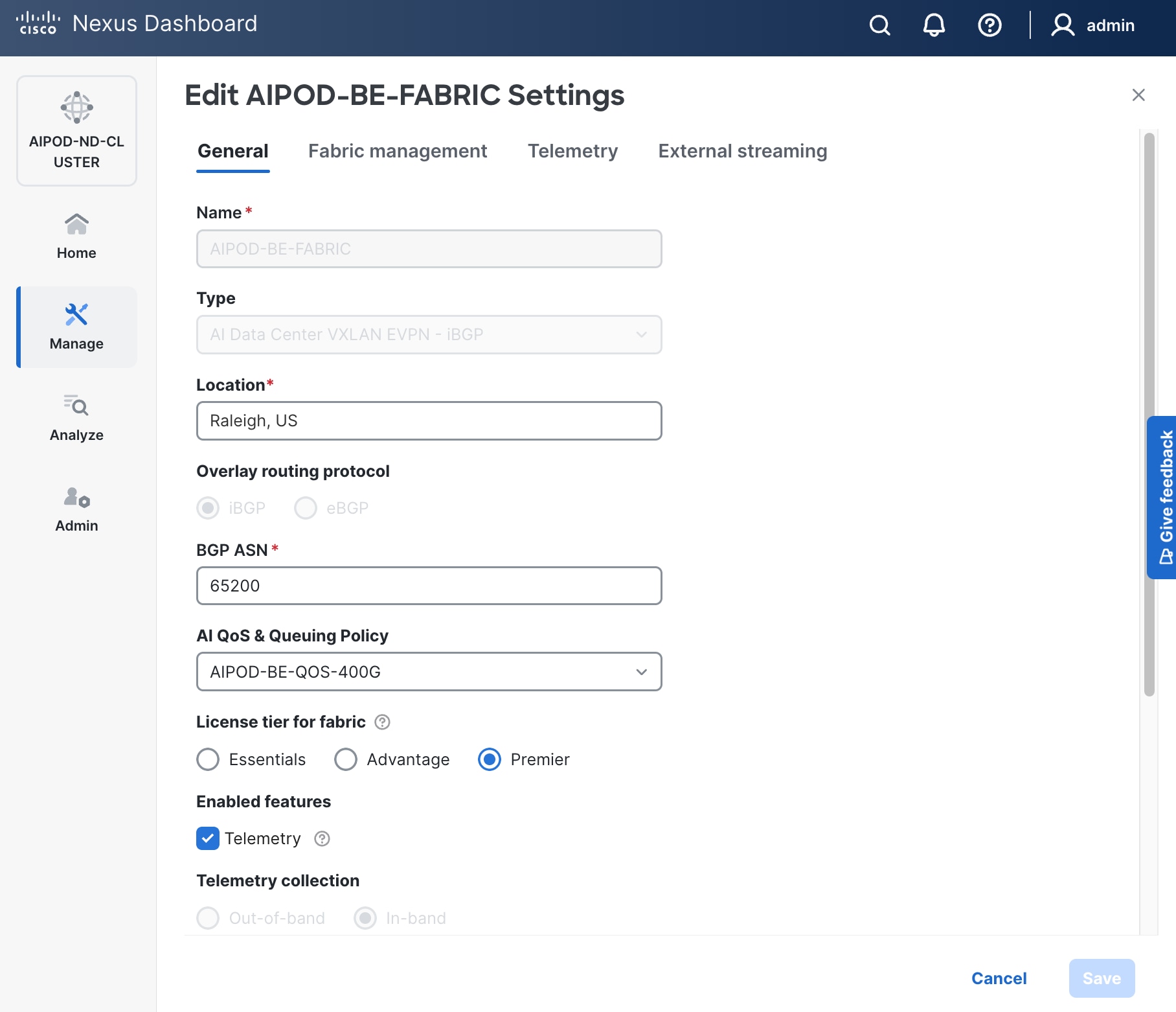

Step 6. For Configuration mode, keep the Default option. Specify Name, Location, and BGP ASN for fabric. Also select the Licensing tier for fabric from the options available. Premier is required for advanced network analytics and day 2 operations. Click the ? icon to see the features available in each tier.

Step 7. Click Next.

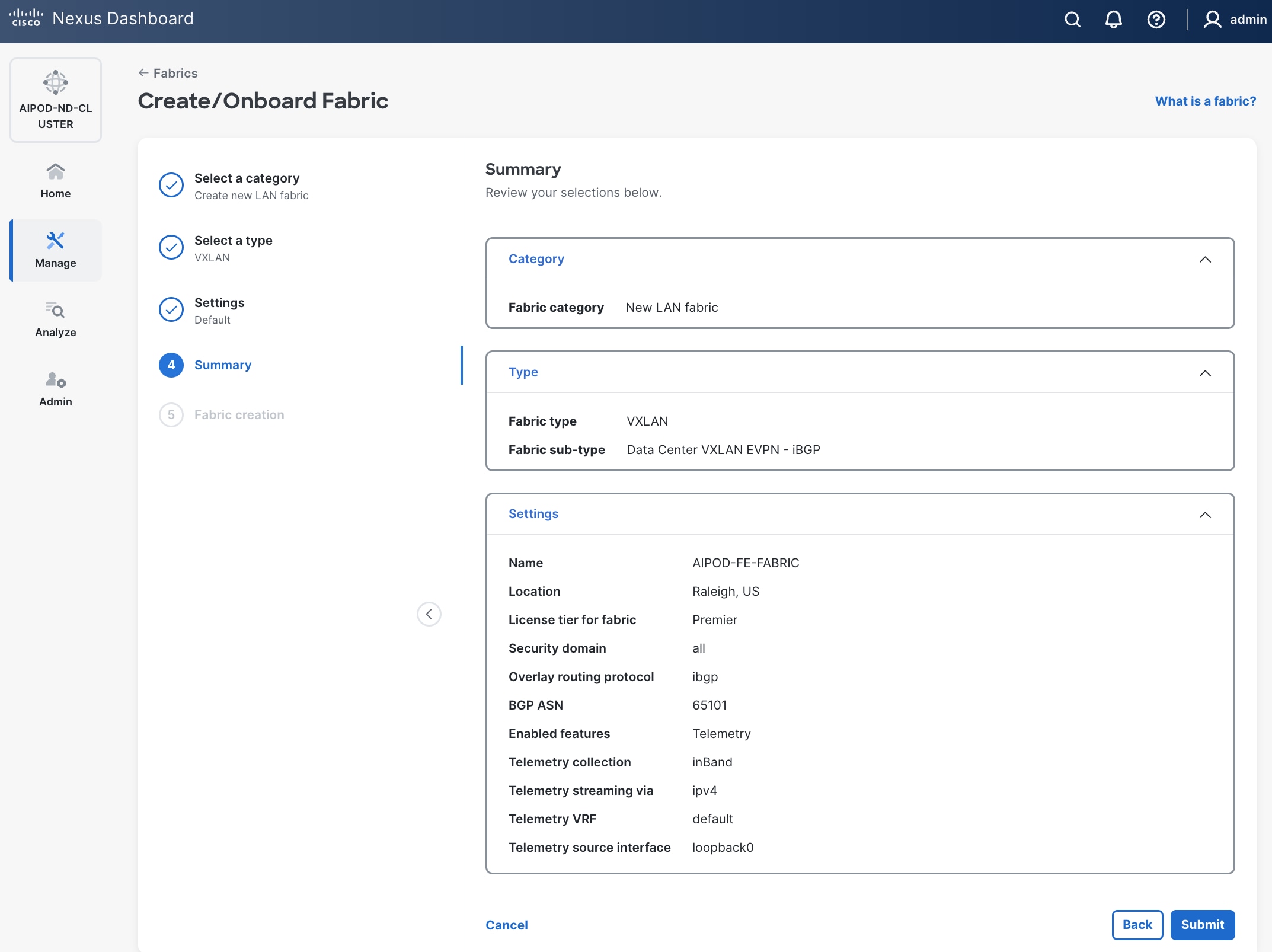

Step 8. In the Summary view, verify the settings and click Submit.

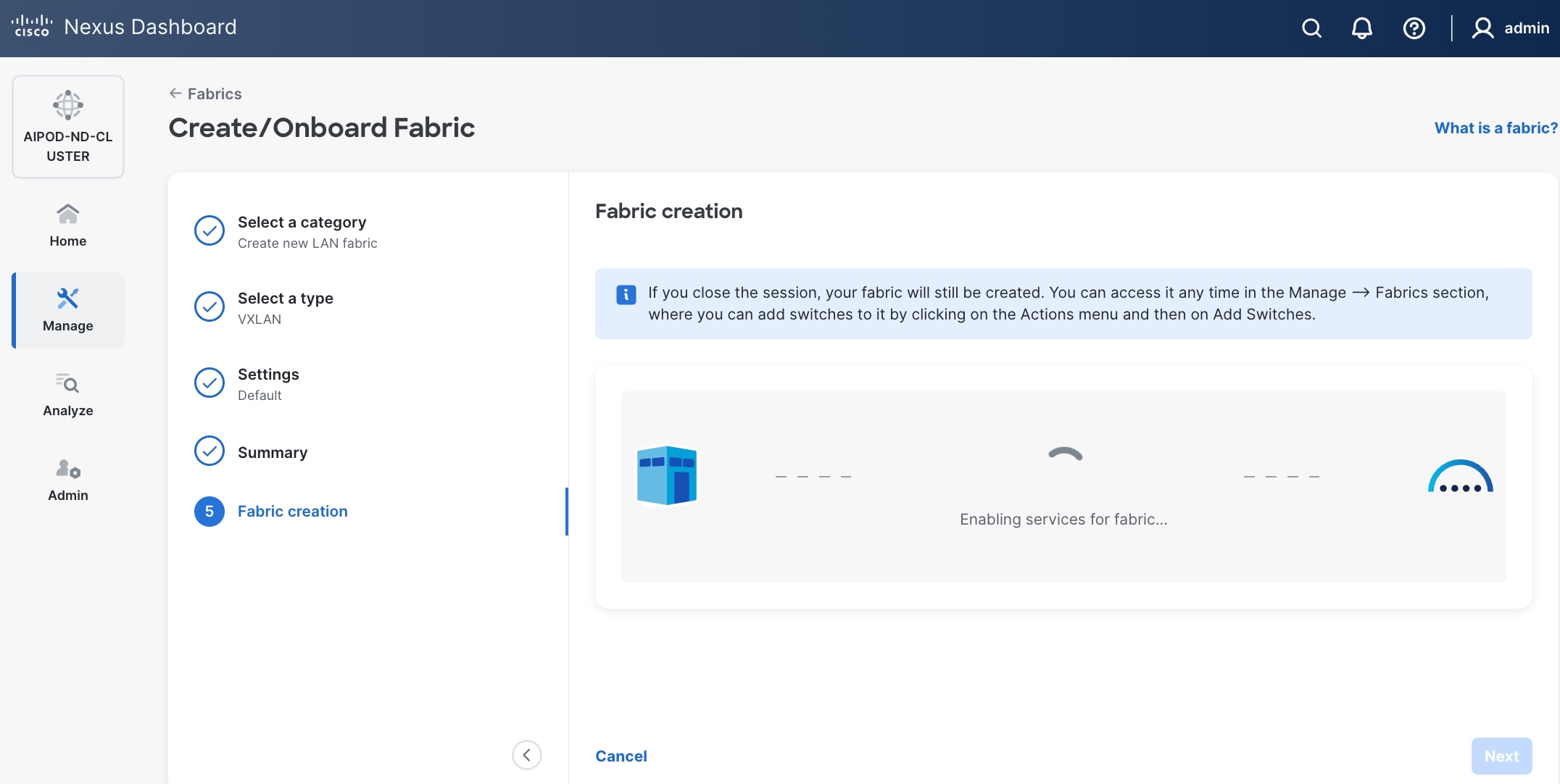

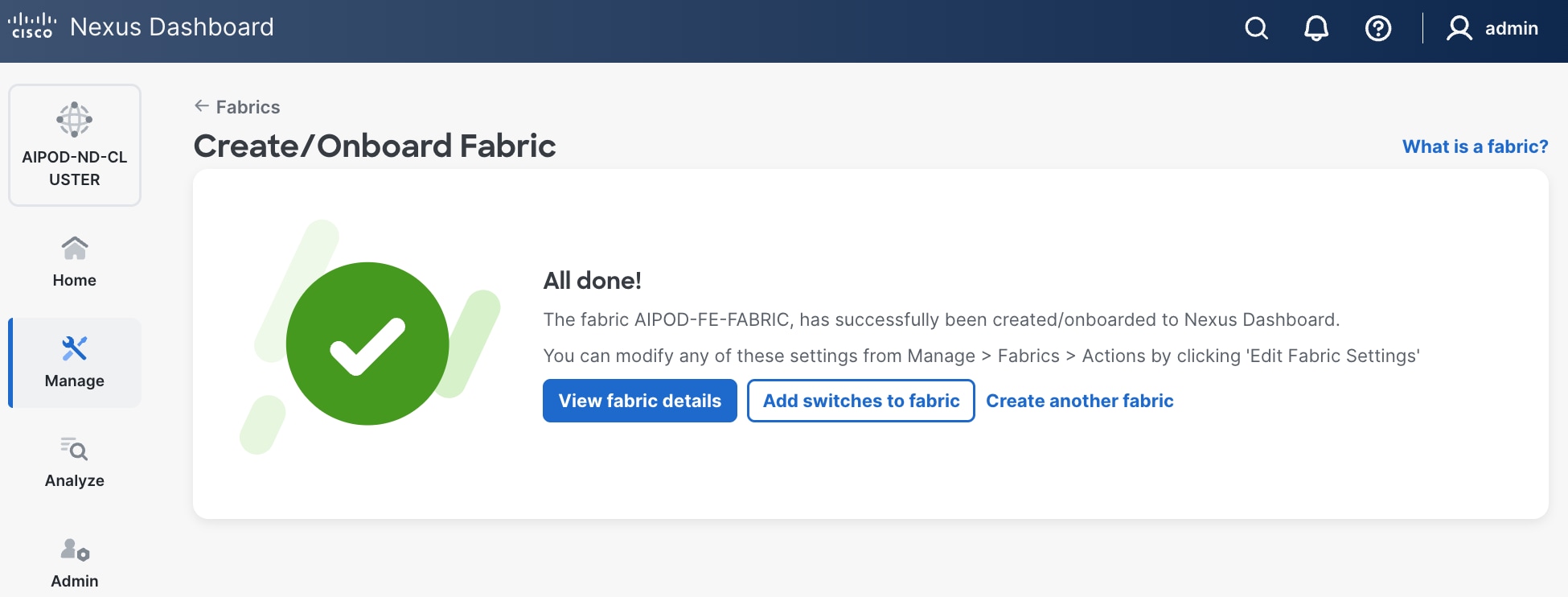

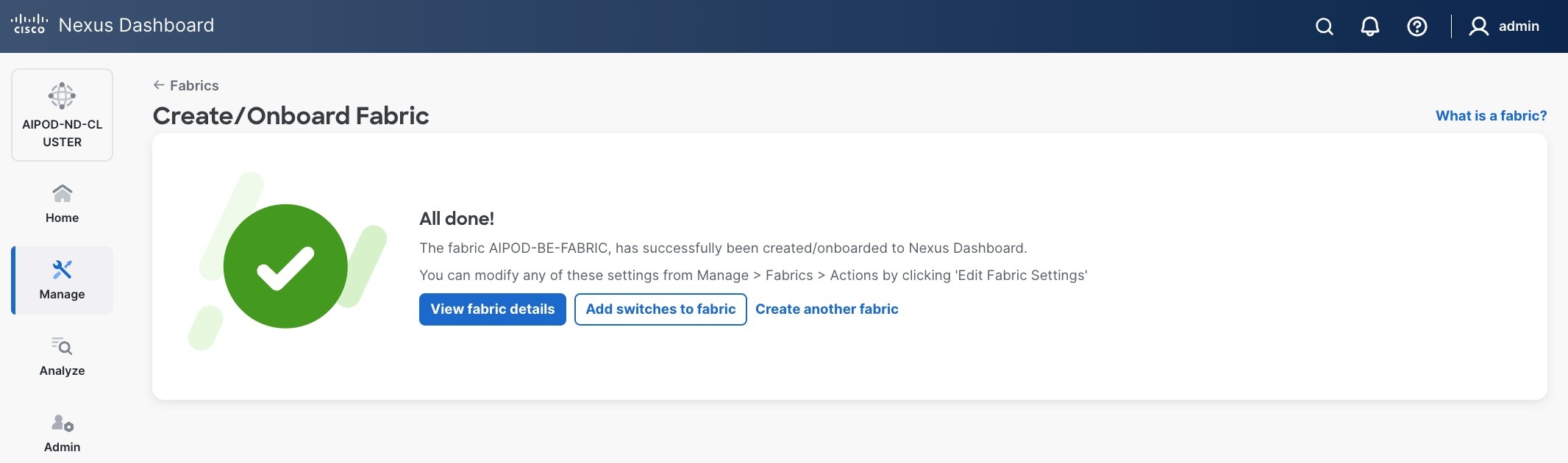

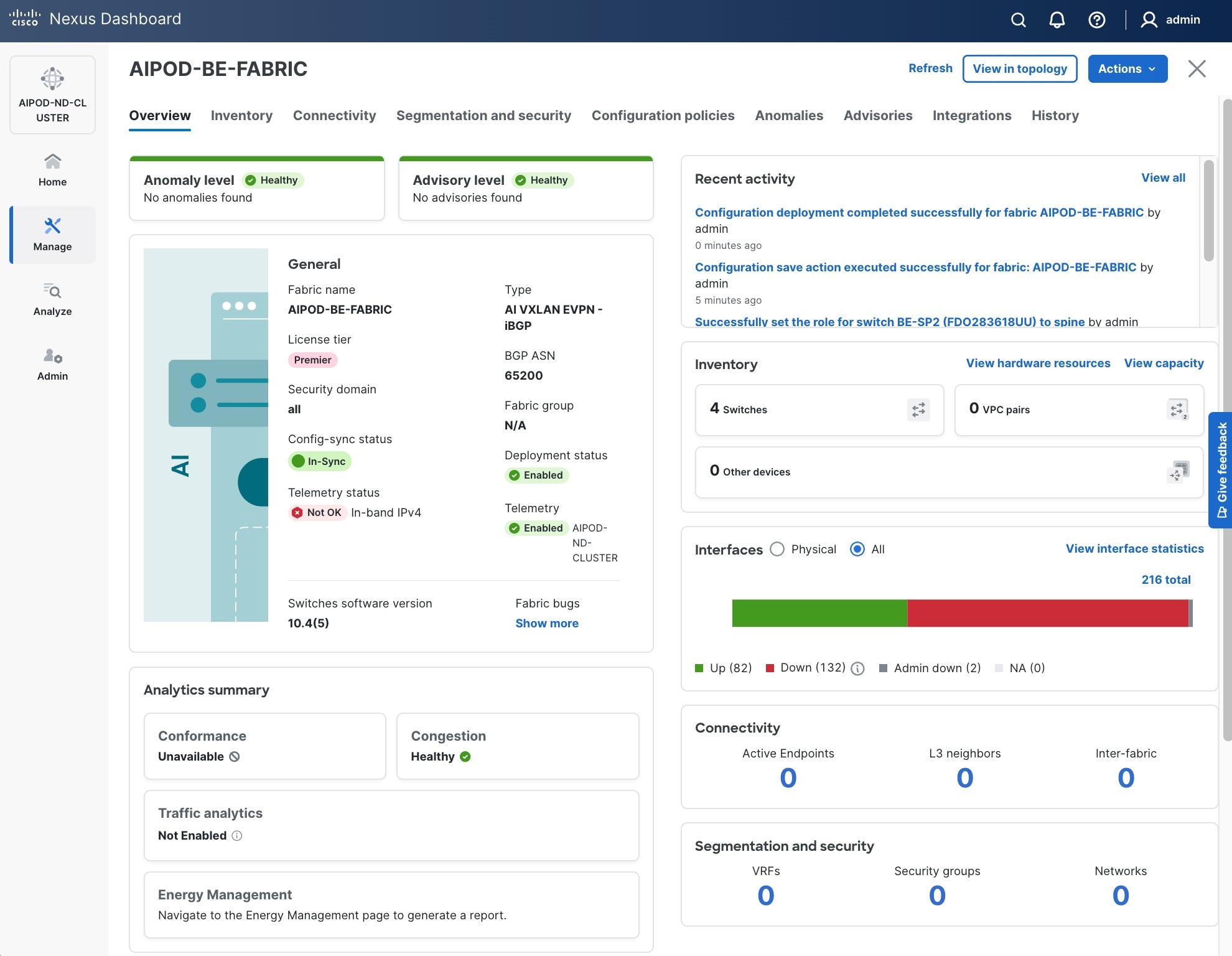

Step 9. When Fabric Creation completes, you should see the following:

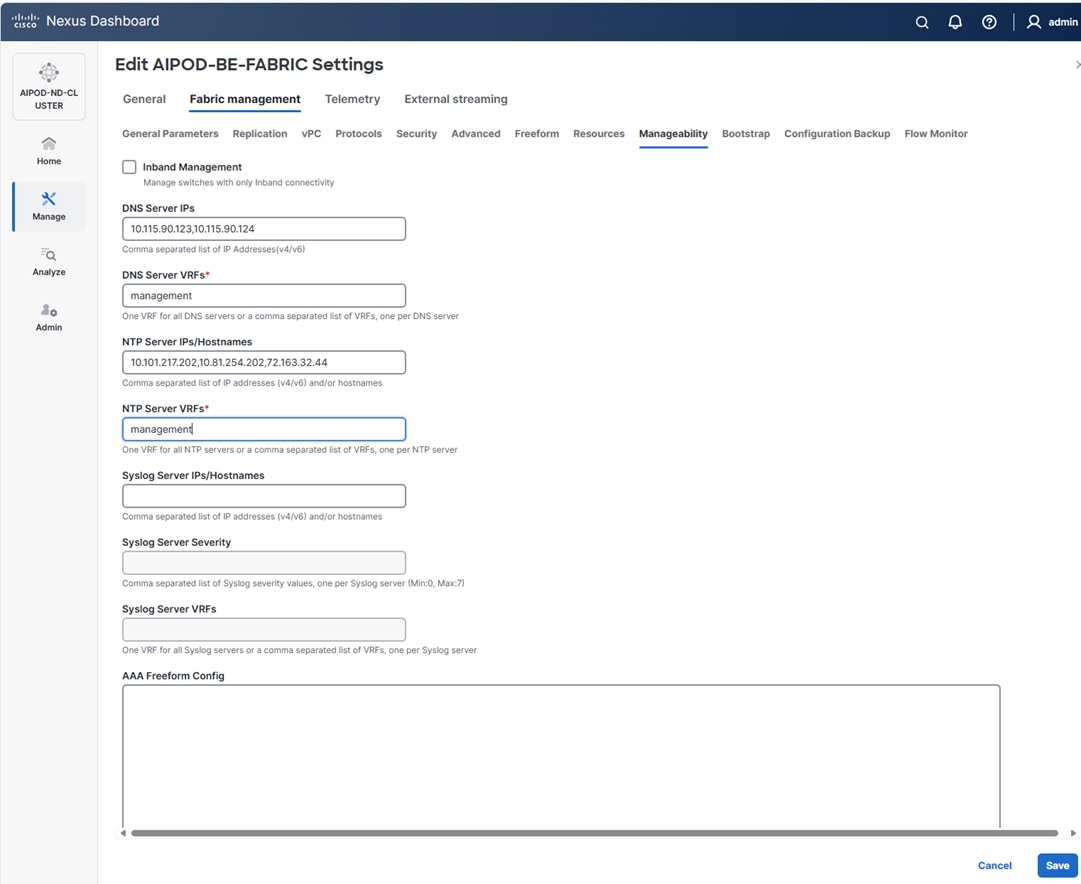

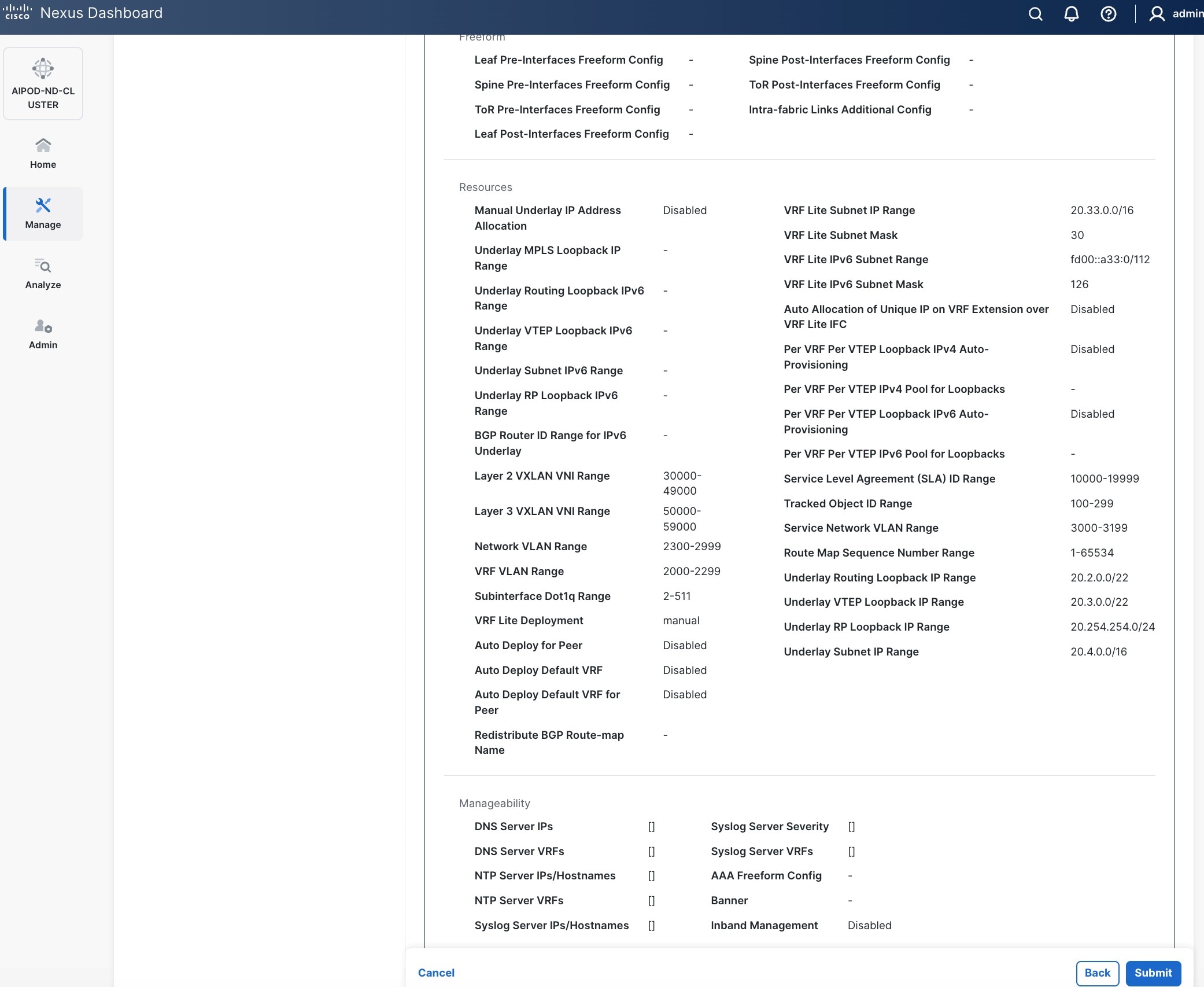

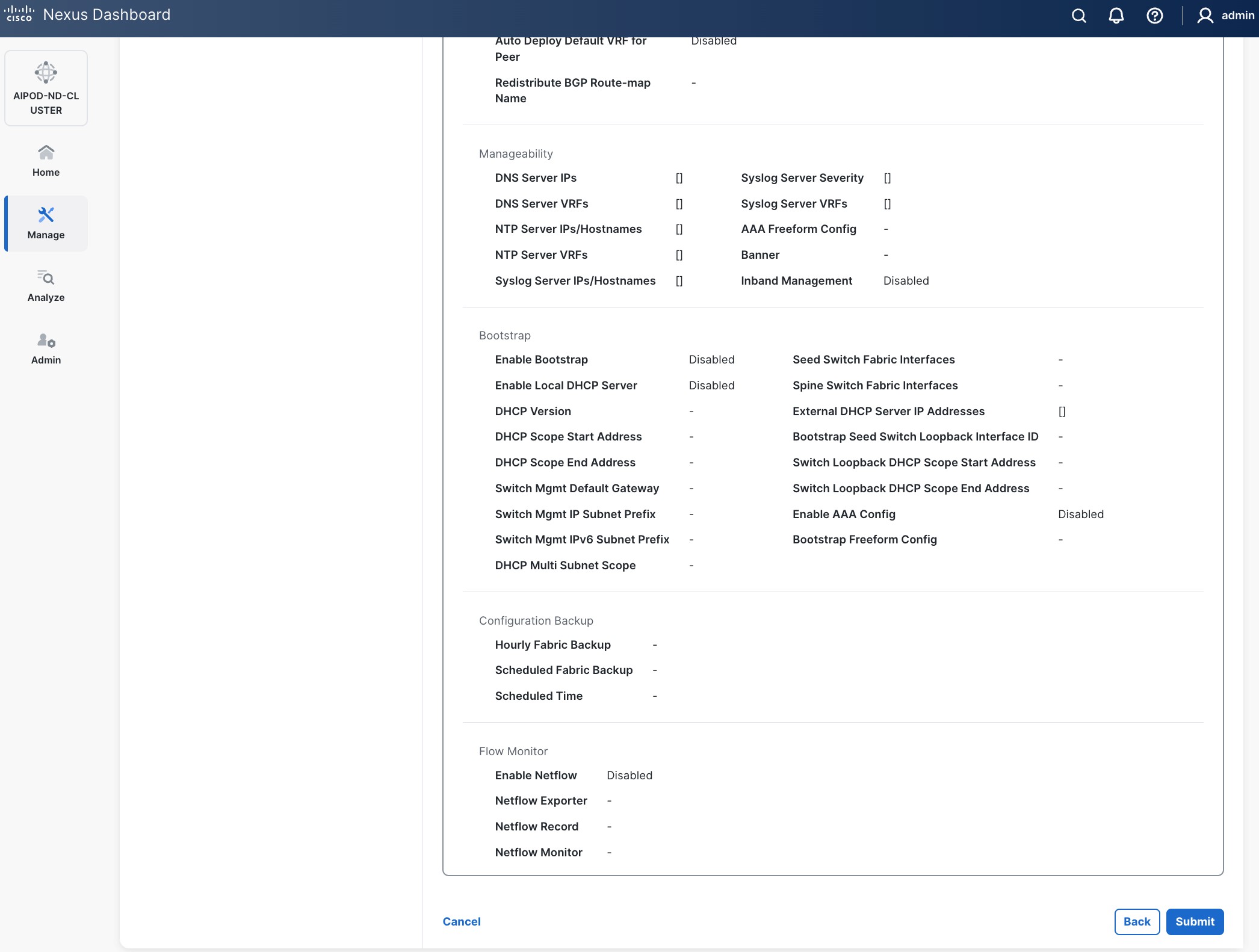

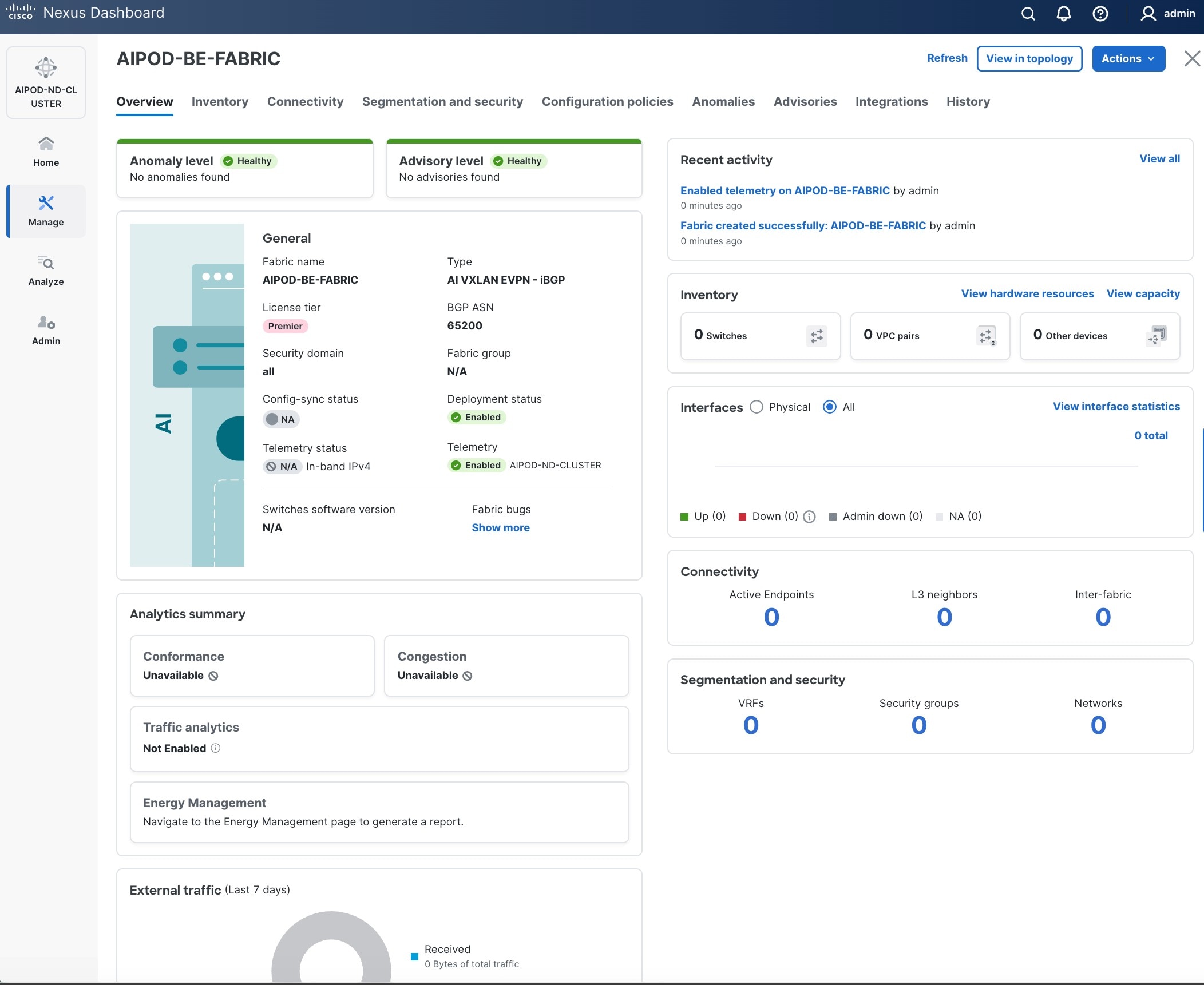

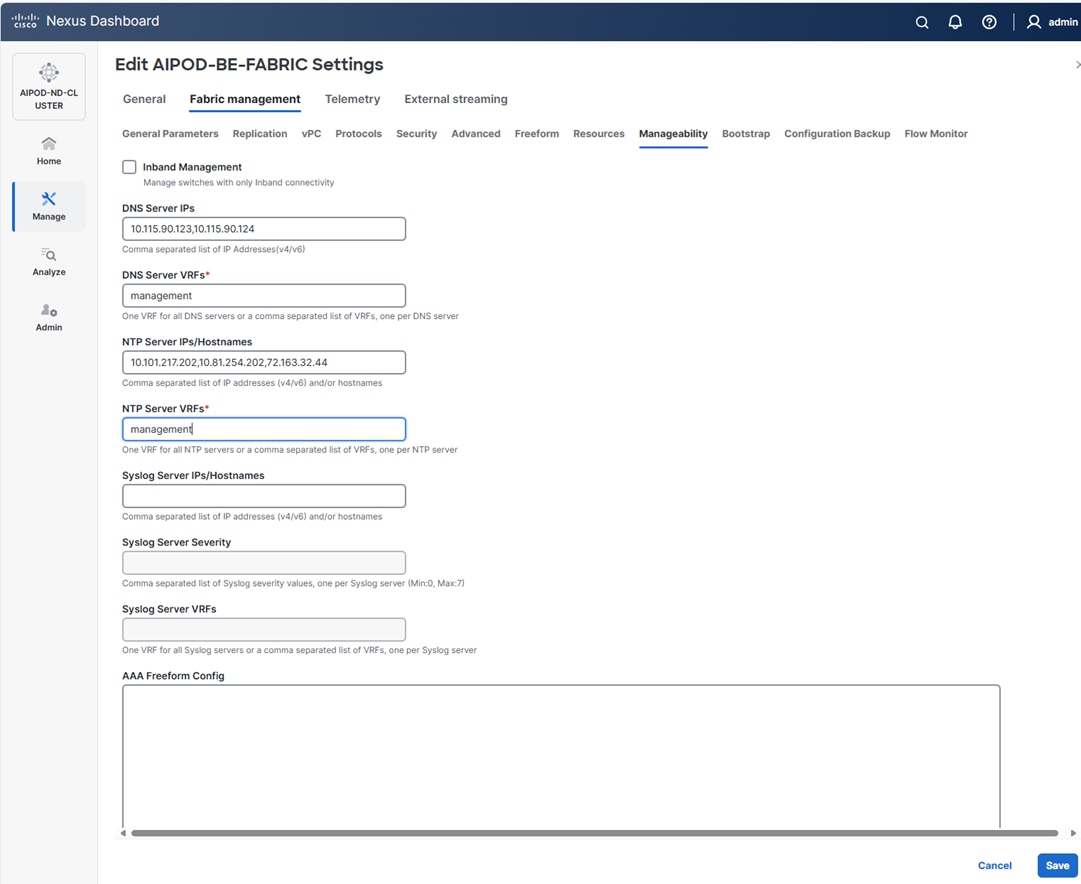

Step 10. Select Manage > Fabrics on the left and then select the FE fabric. From the Actions drop-down list, select Edit fabric settings. Select the Fabric management tab and the Manageability tab underneath. Add the NTP Server IPs and the NTP Server VRF (management) and click Save.

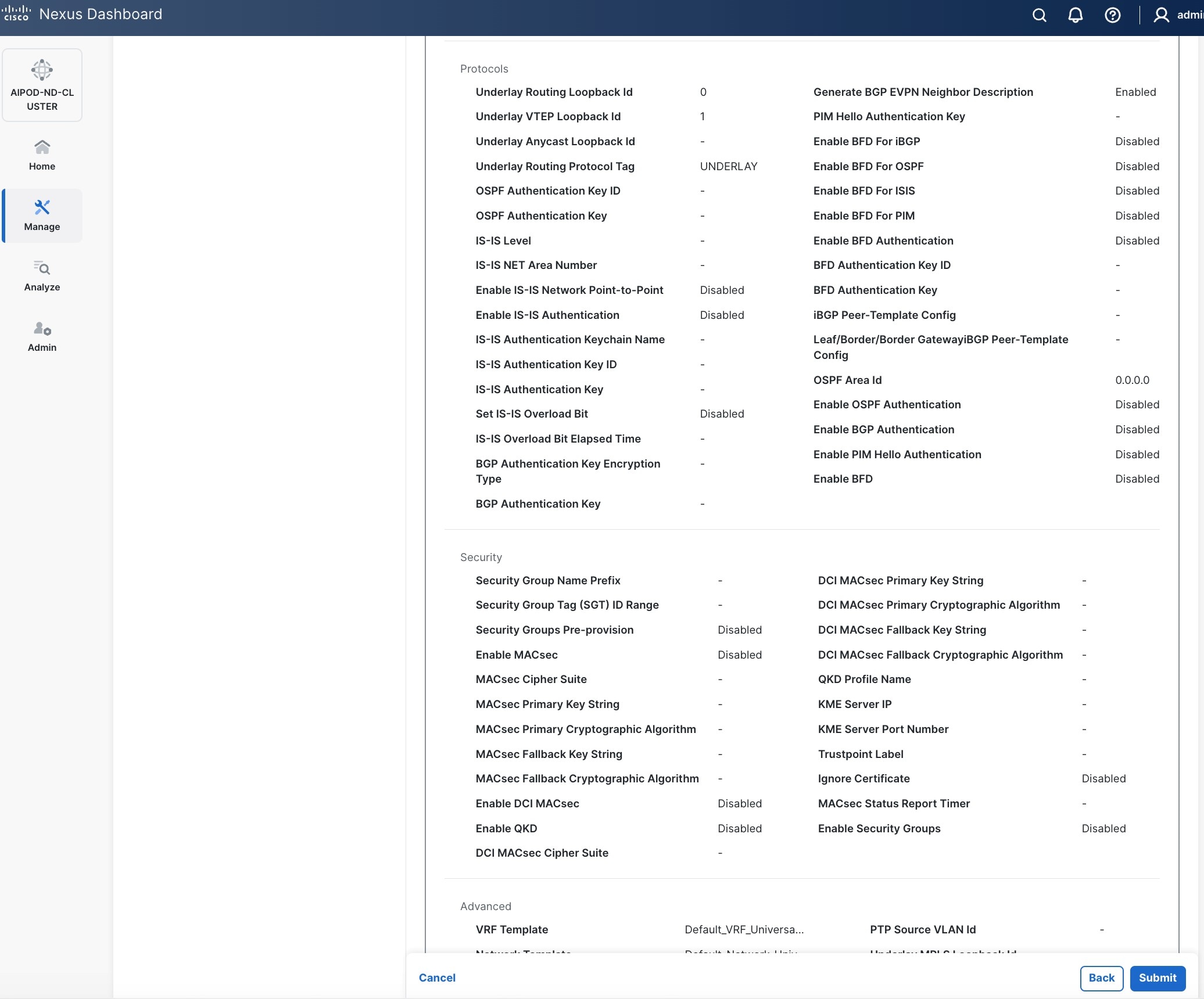

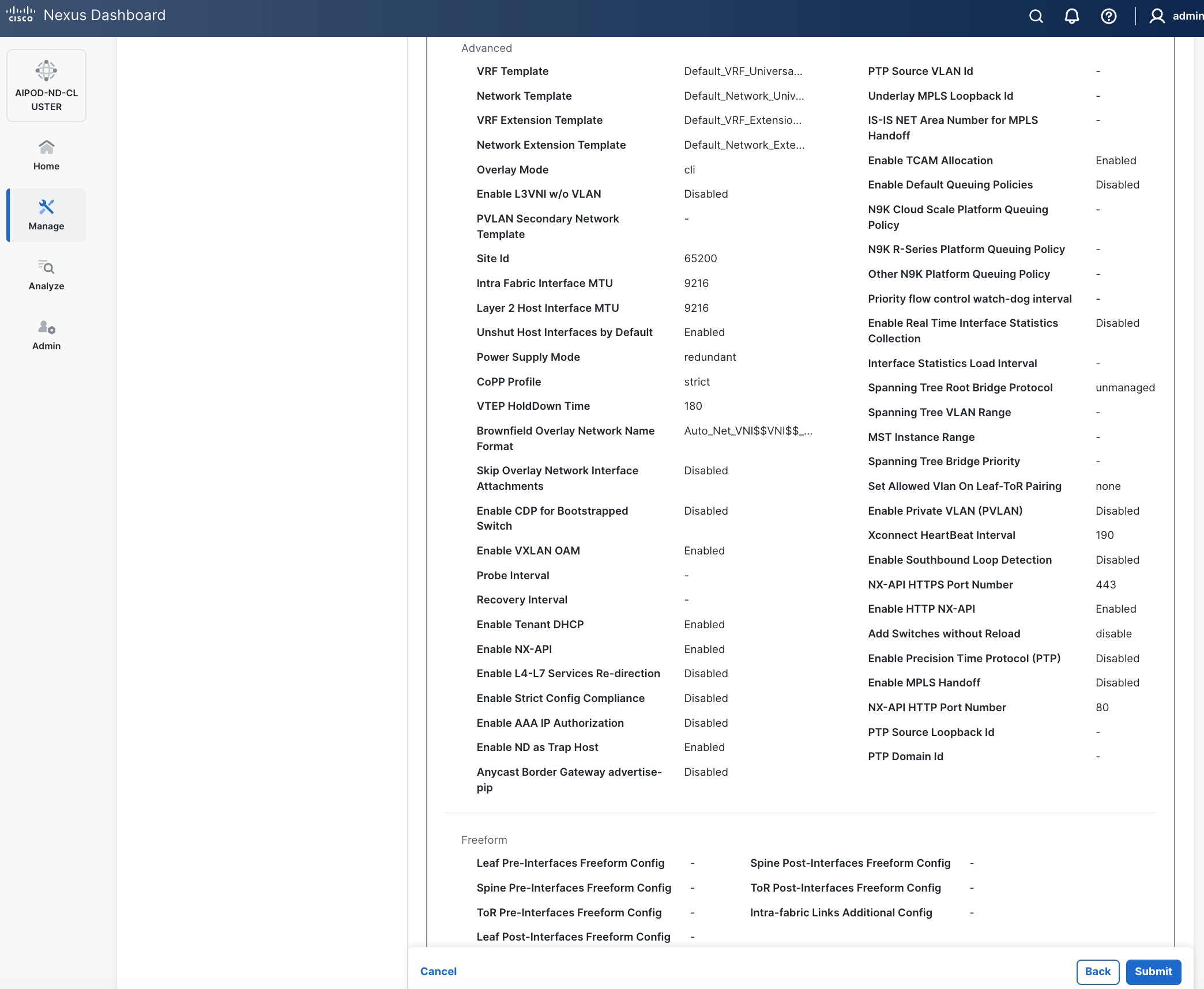

Note: This screenshot and the following screenshot show BE fabric but are the same for the FE fabric.

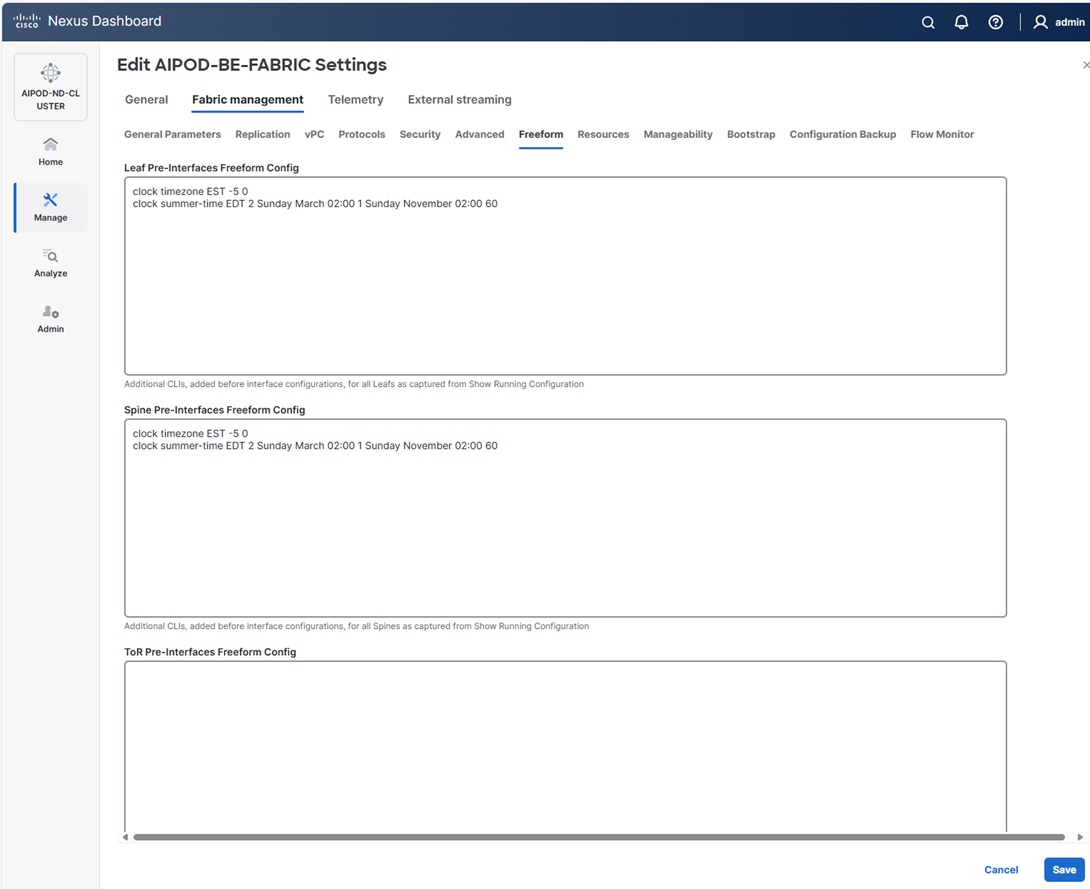

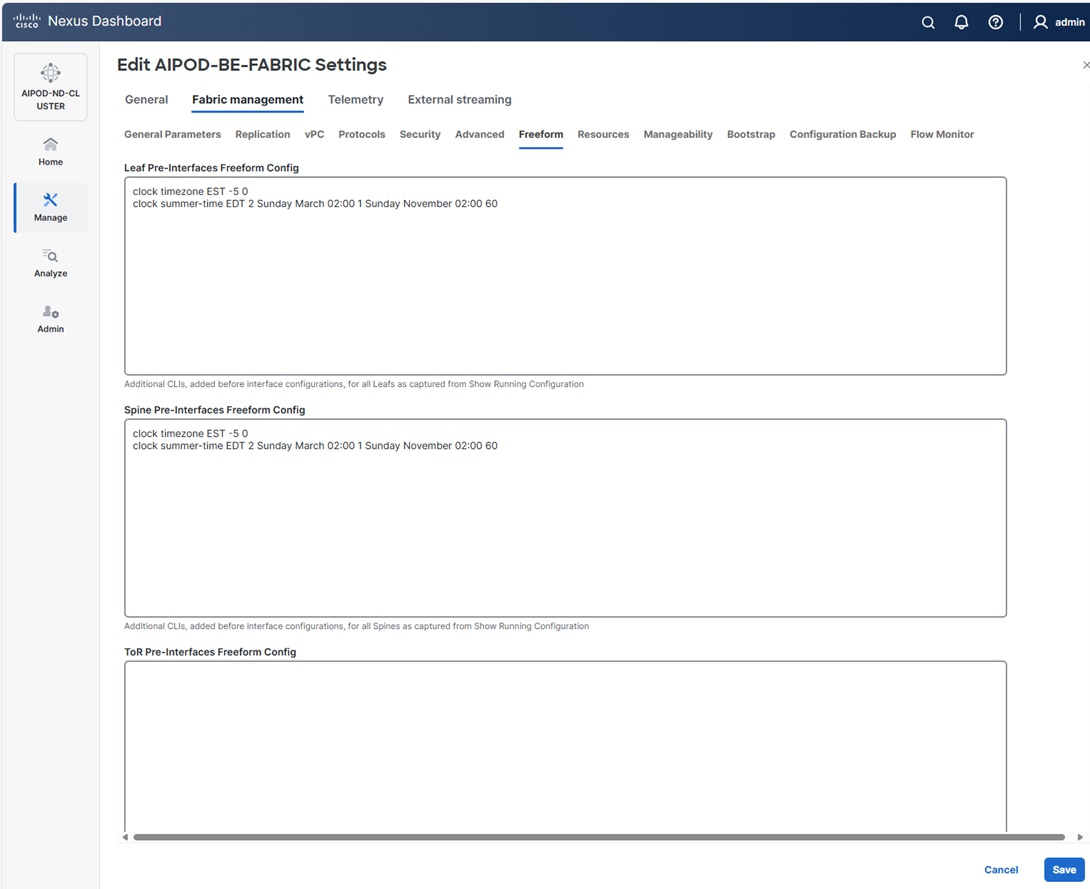

Step 11. Select the Freeform tab and optionally enter the info shown in the screenshot modified for your timezone. Click Save.

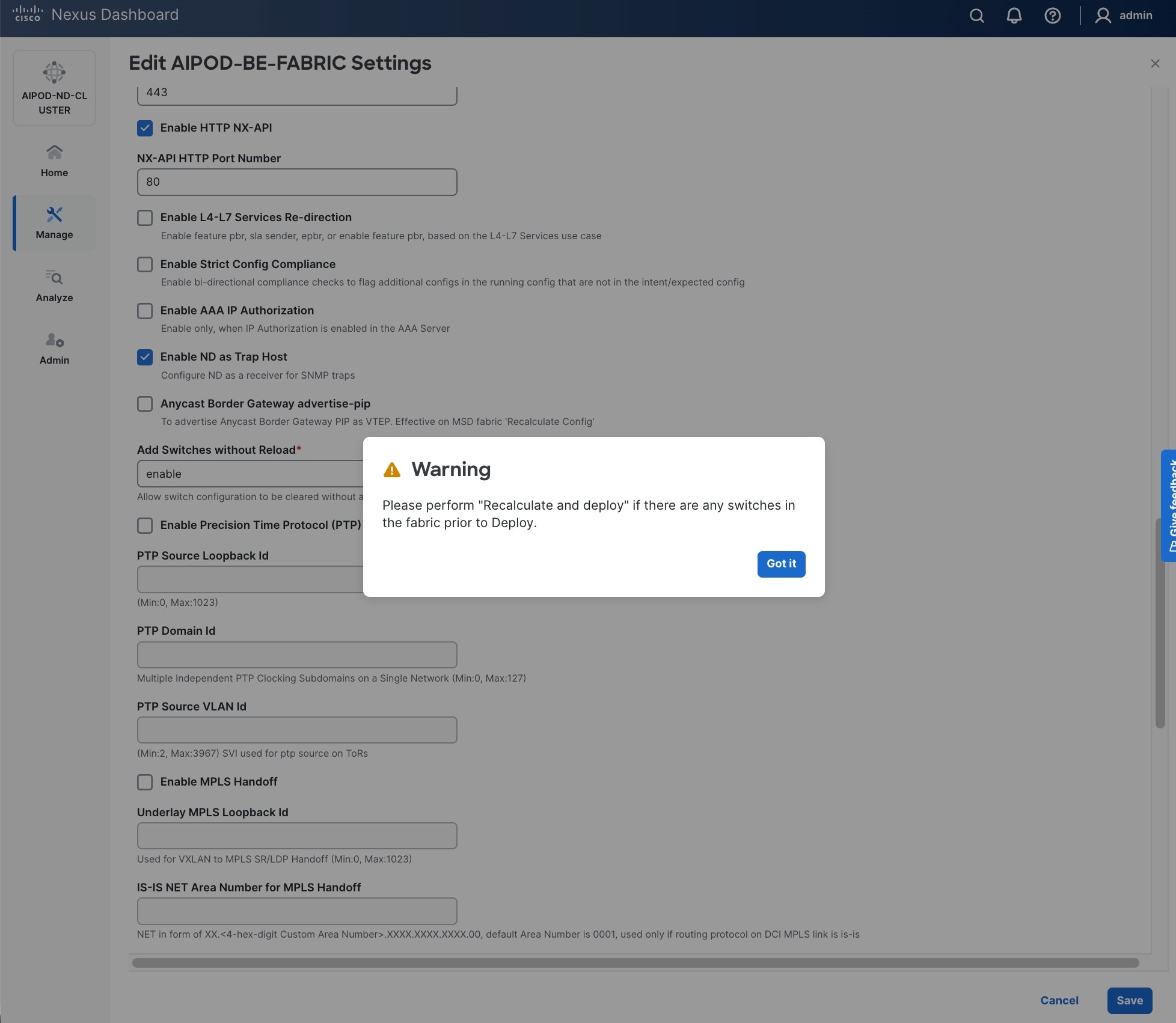

Step 12. If you want to add switches without a reload, click View fabric details. Select Fabric Management > Advanced tabs and scroll down to find the field for Add switches without Reload and change setting to enable. Click Save, followed by Got it in the pop-up window.

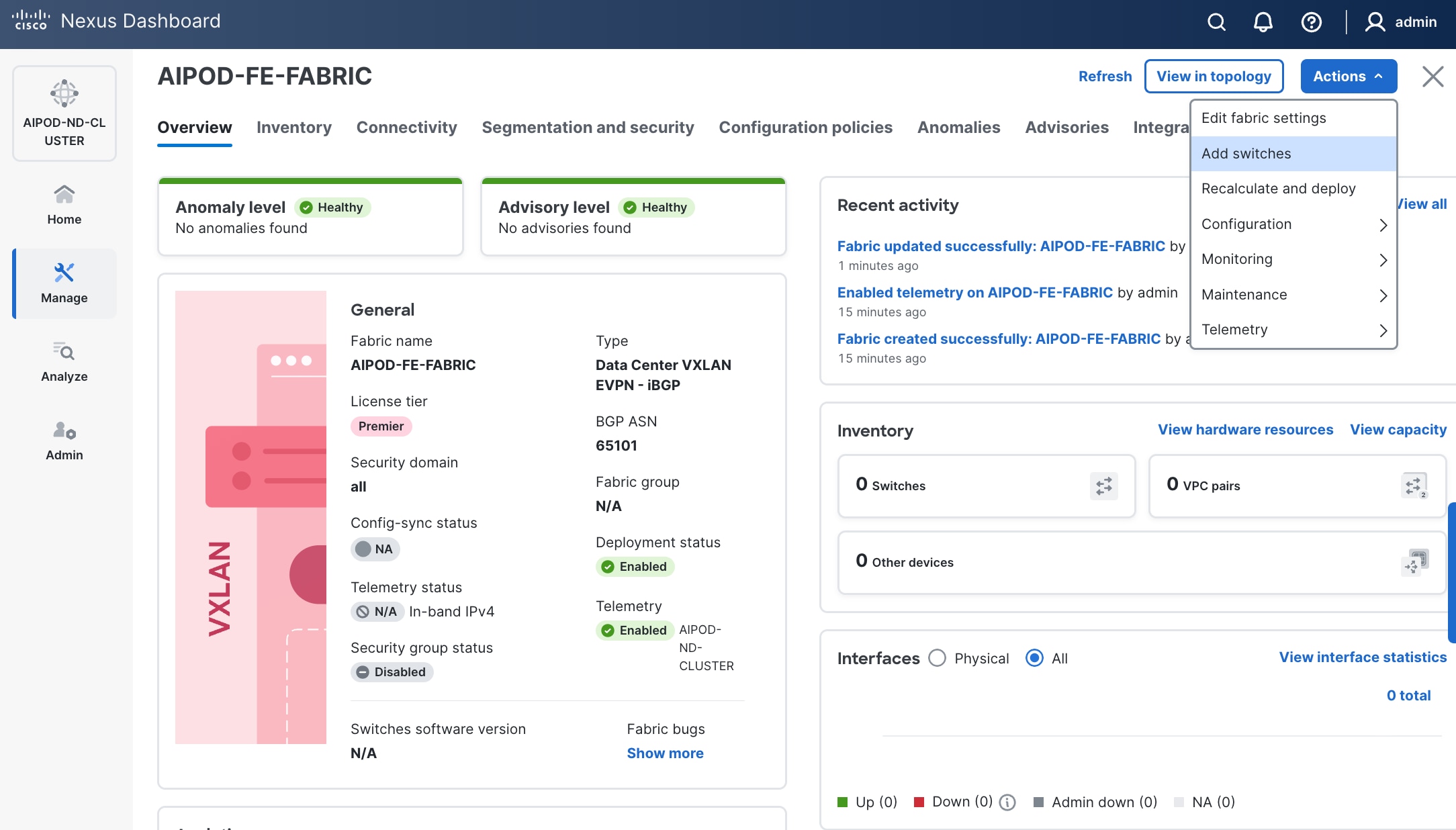

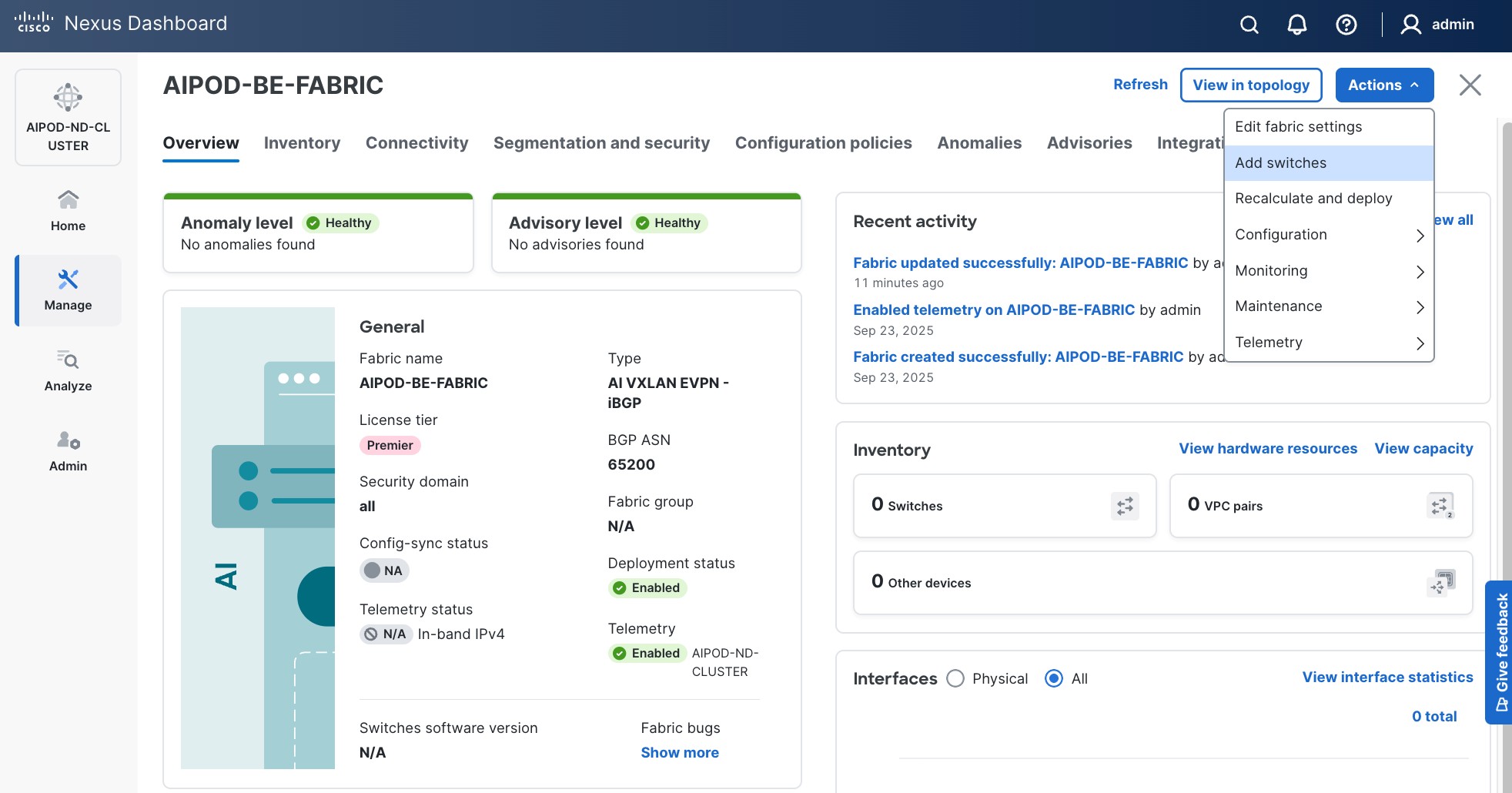

Step 13. From the Manage > Fabrics view, click the fabric name to add switches to the fabric.

Step 14. Click Actions and select Add Switches from the drop-down list.

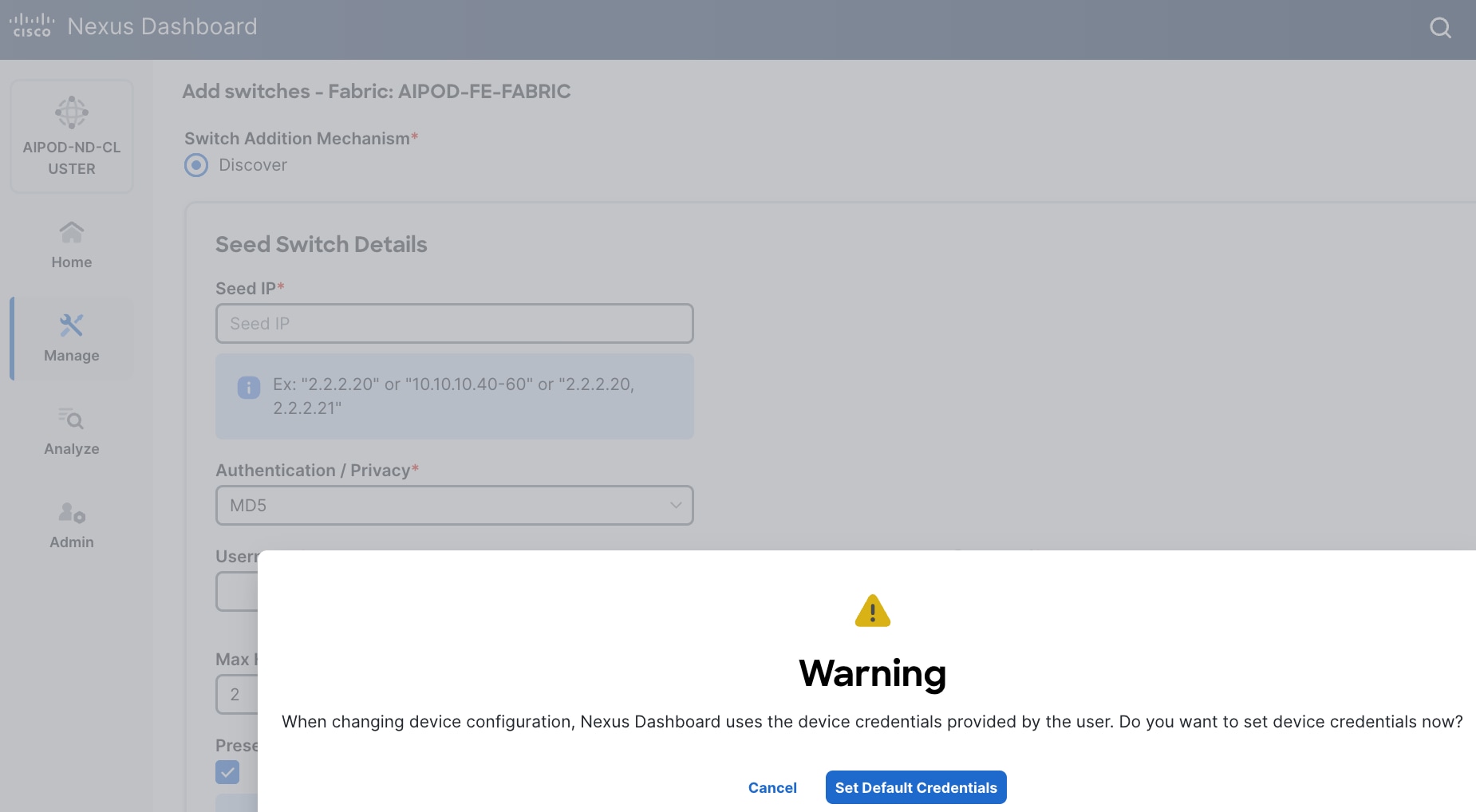

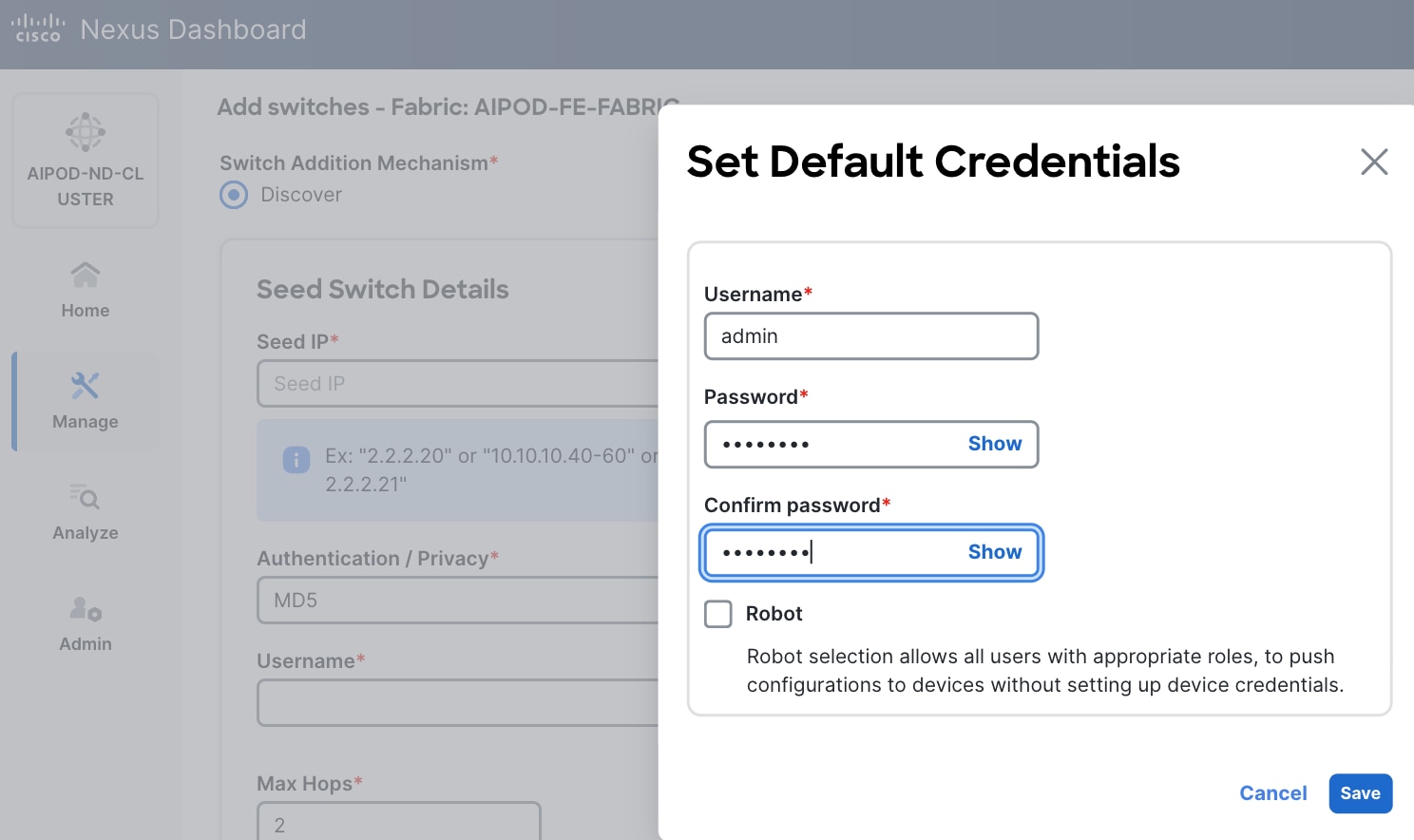

Step 15. In the pop-up window, click Set Default Credentials.

Step 16. Specify username and password. Click Save.

Step 17. Click OK.

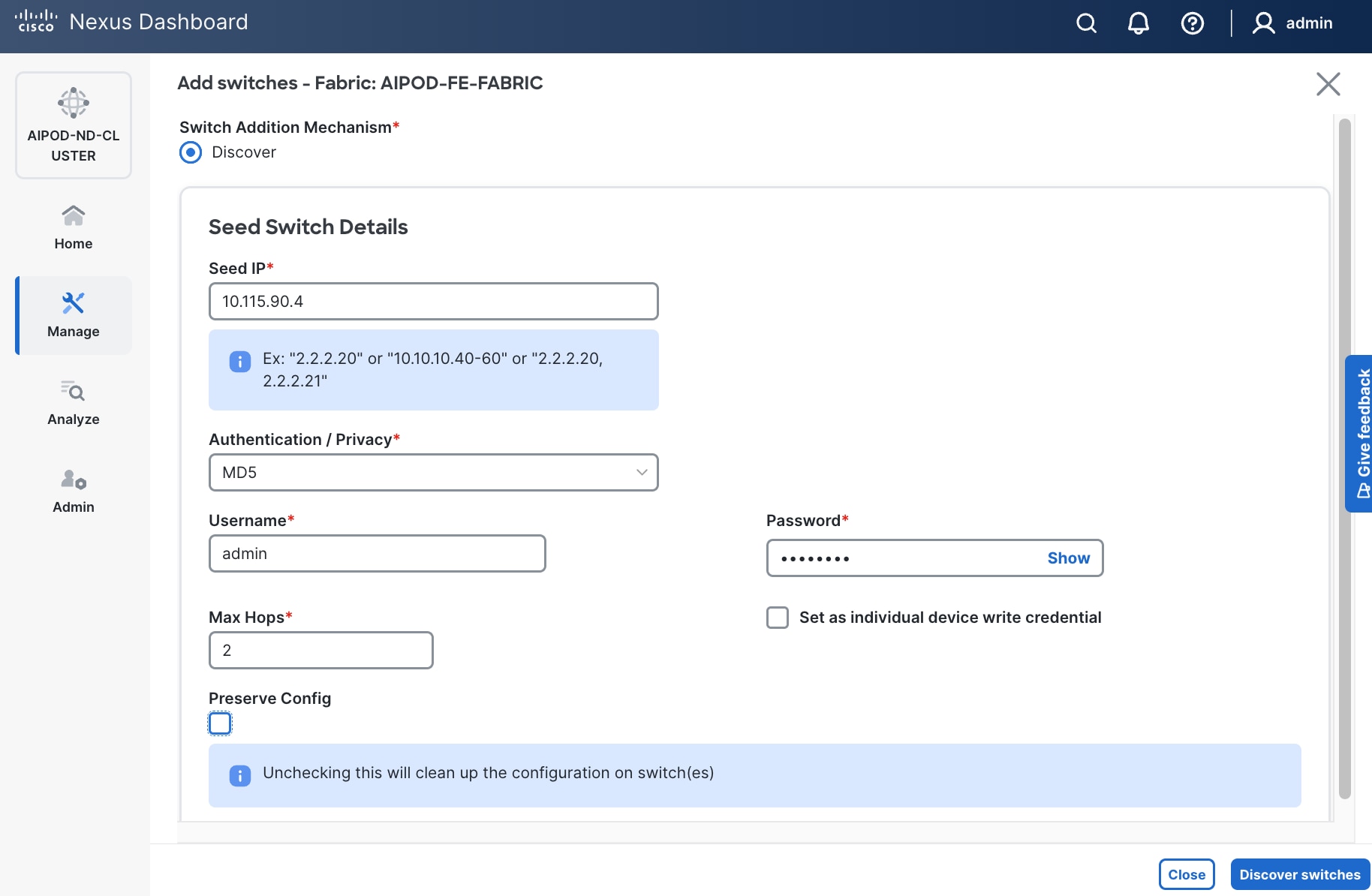

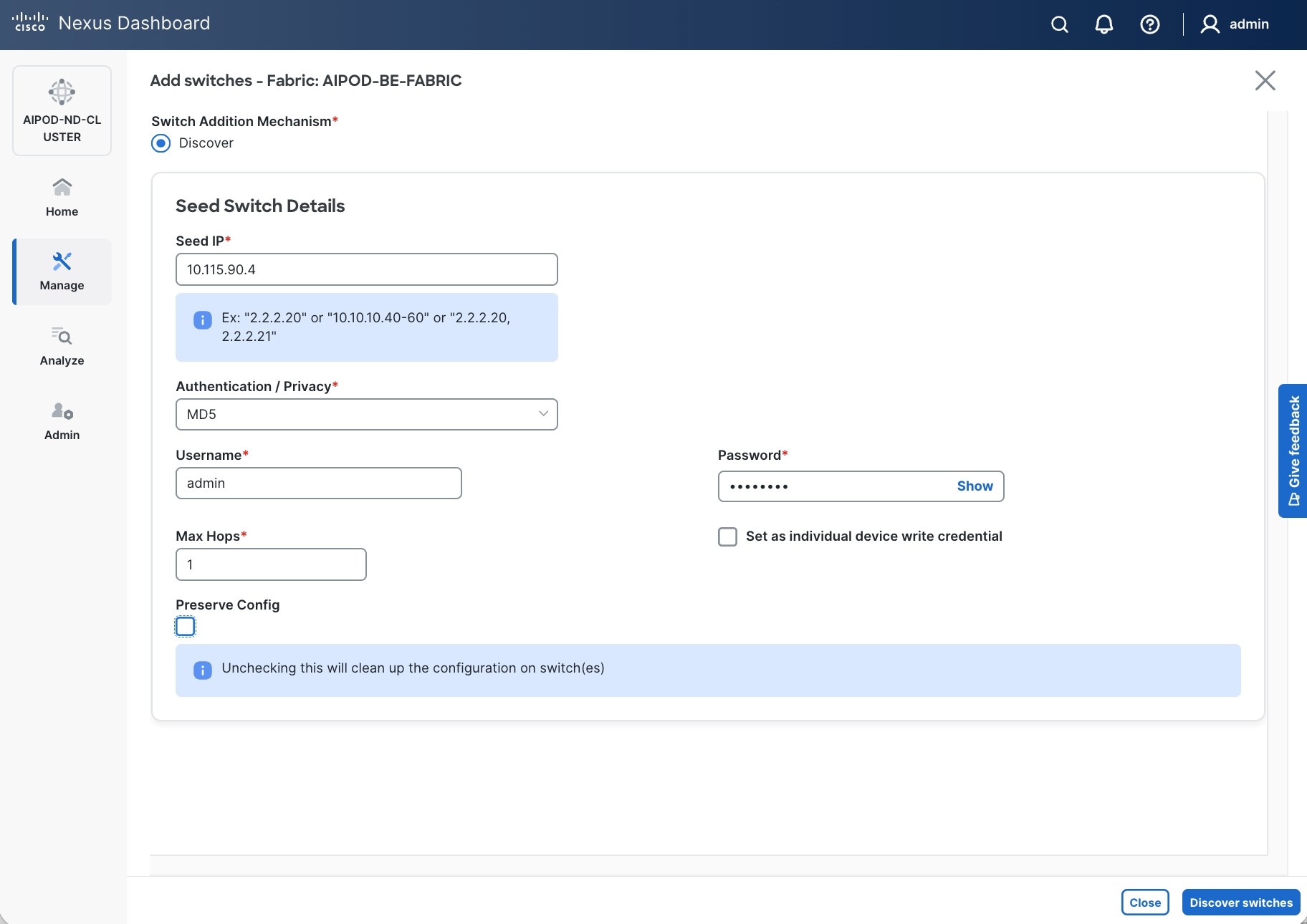

Step 18. Specify Seed IP, username and password. Adjust Max hops as needed. Click Discover Switches.

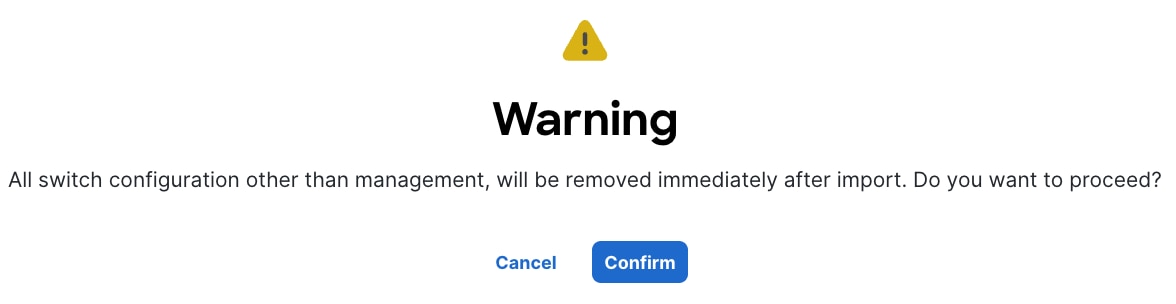

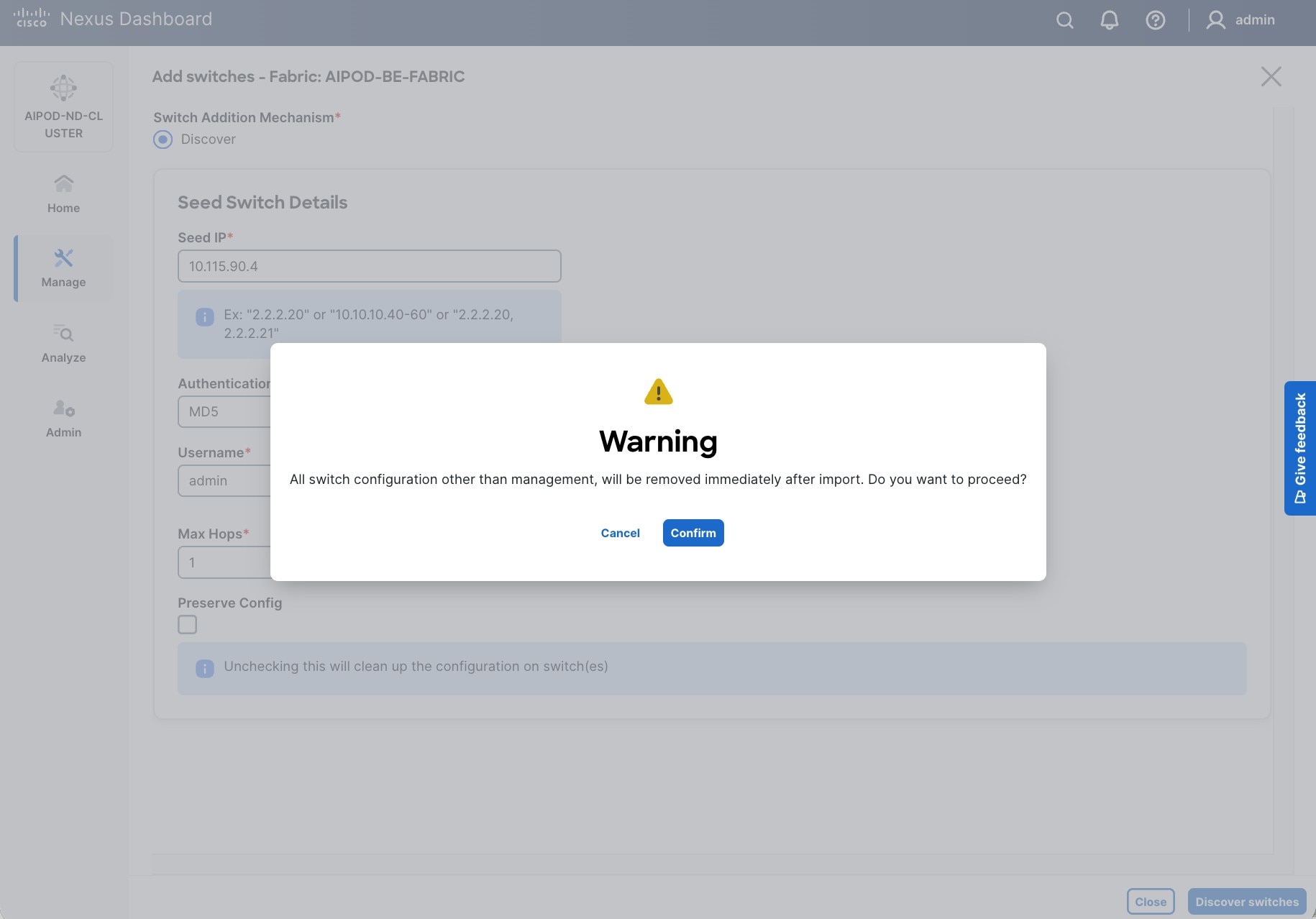

Step 19. Click Confirm in the pop-up Warning.

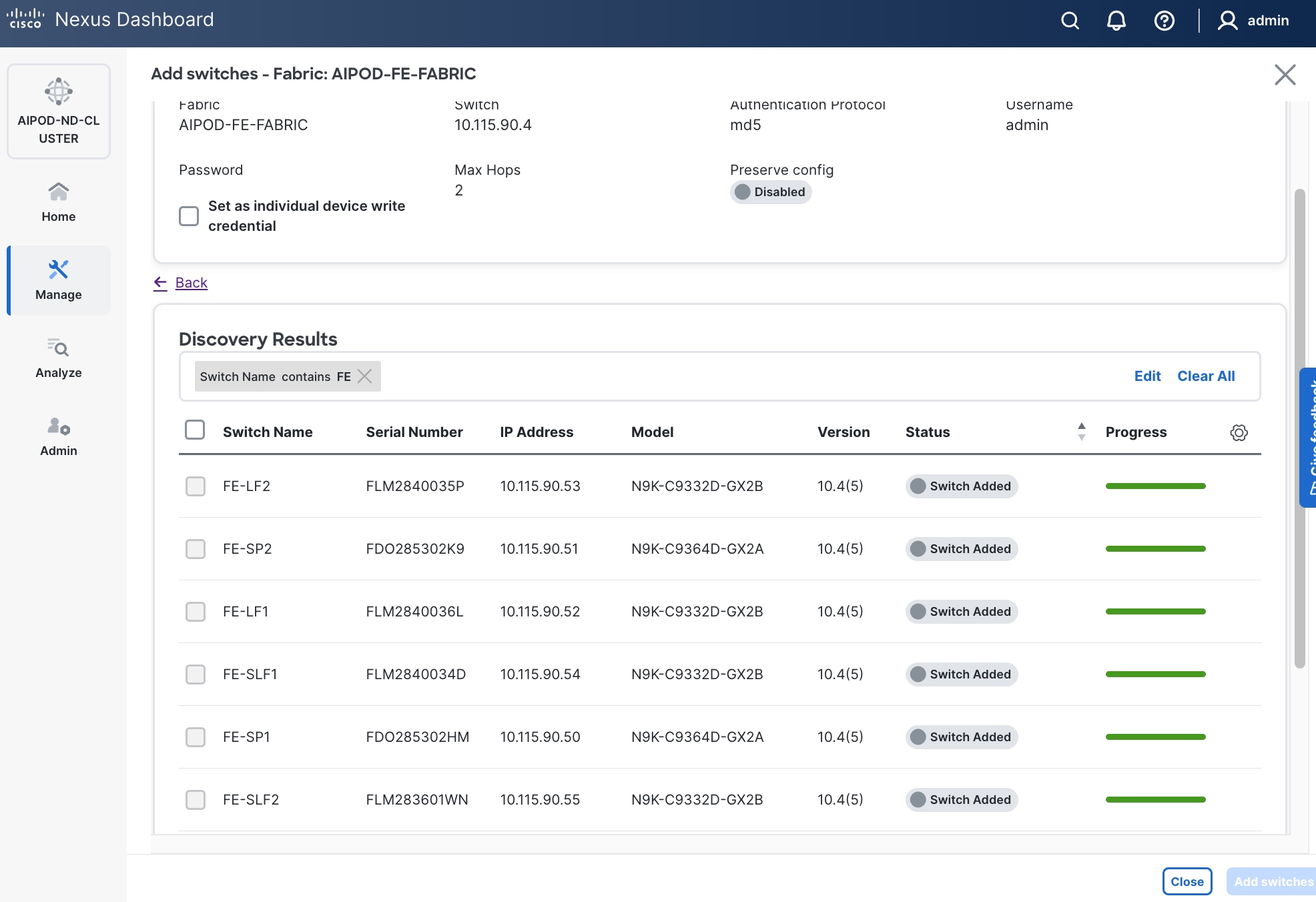

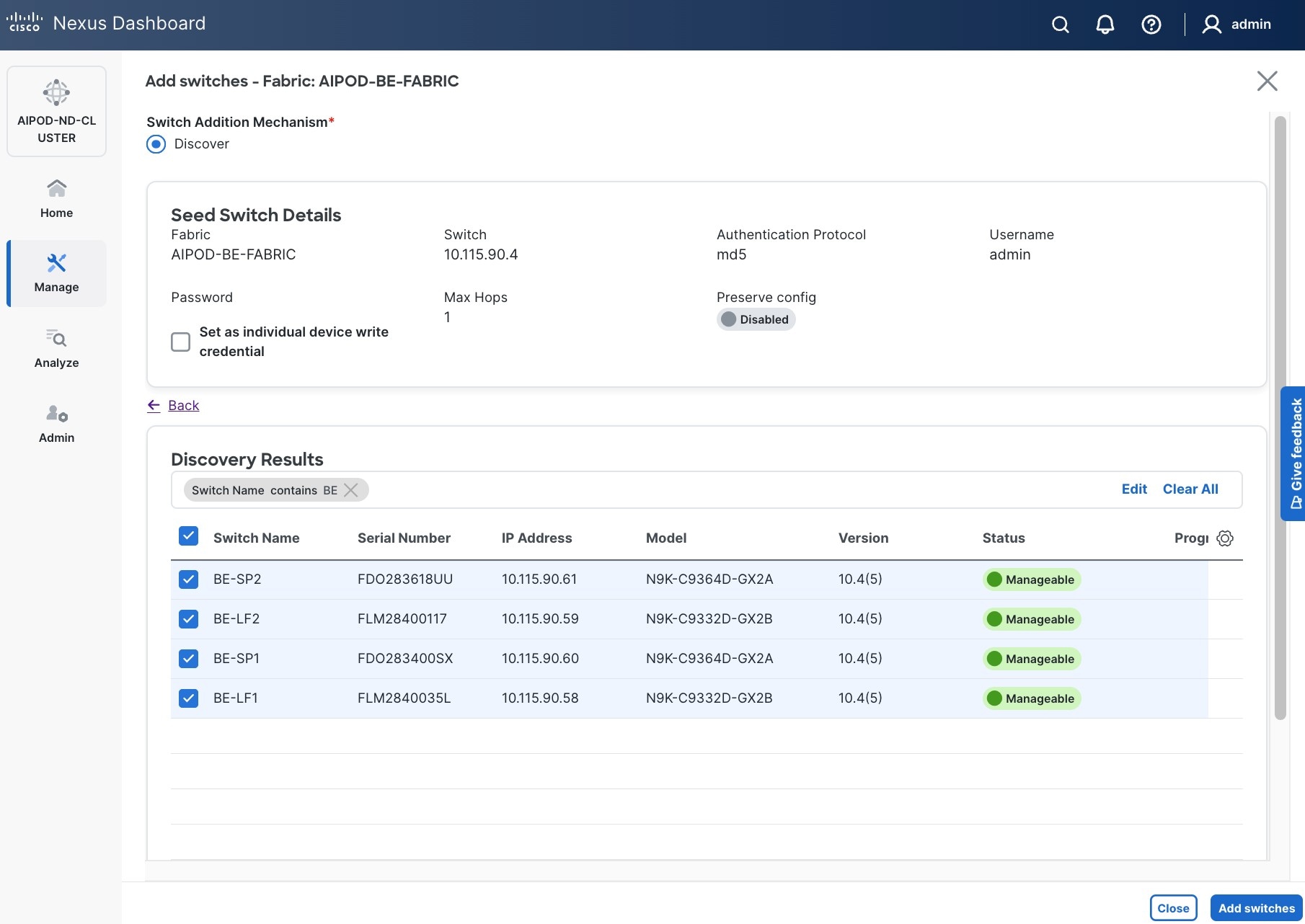

Step 20. Filter the discovered switch list as needed to view just the switches you want to add.

Step 21. Select all switches to be added. Click Add switches.

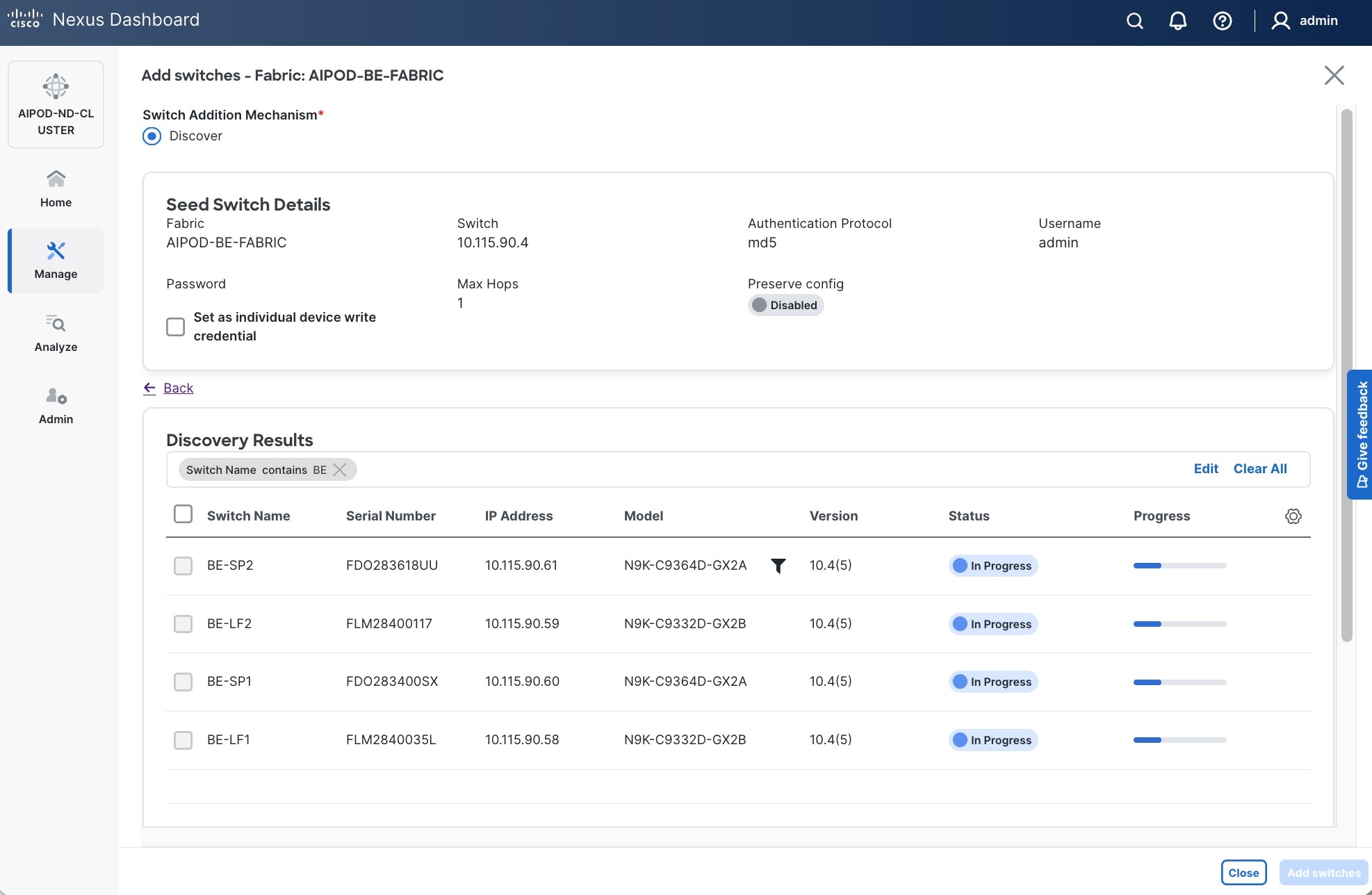

Step 22. Click Close when all switches have been added.

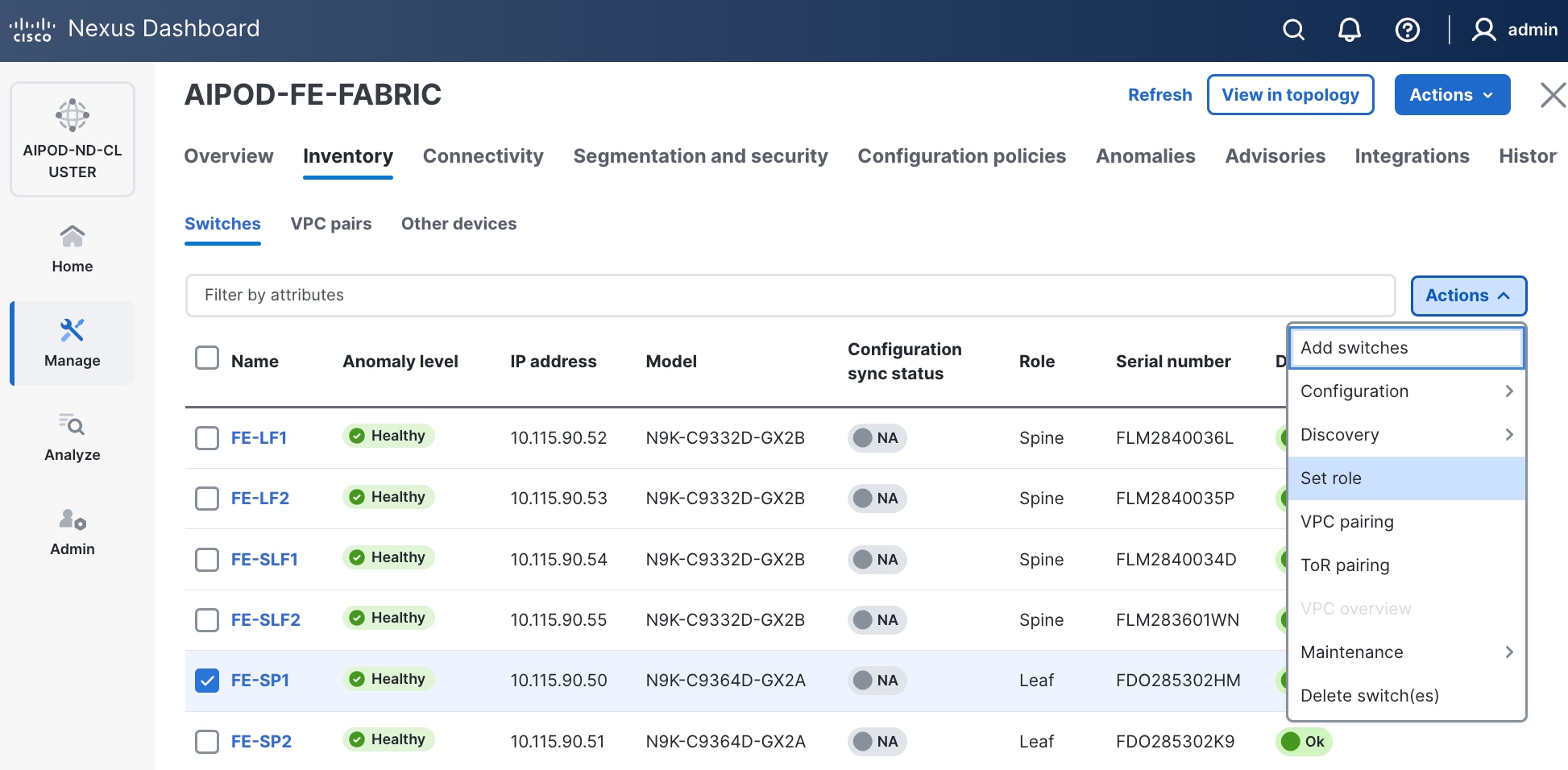

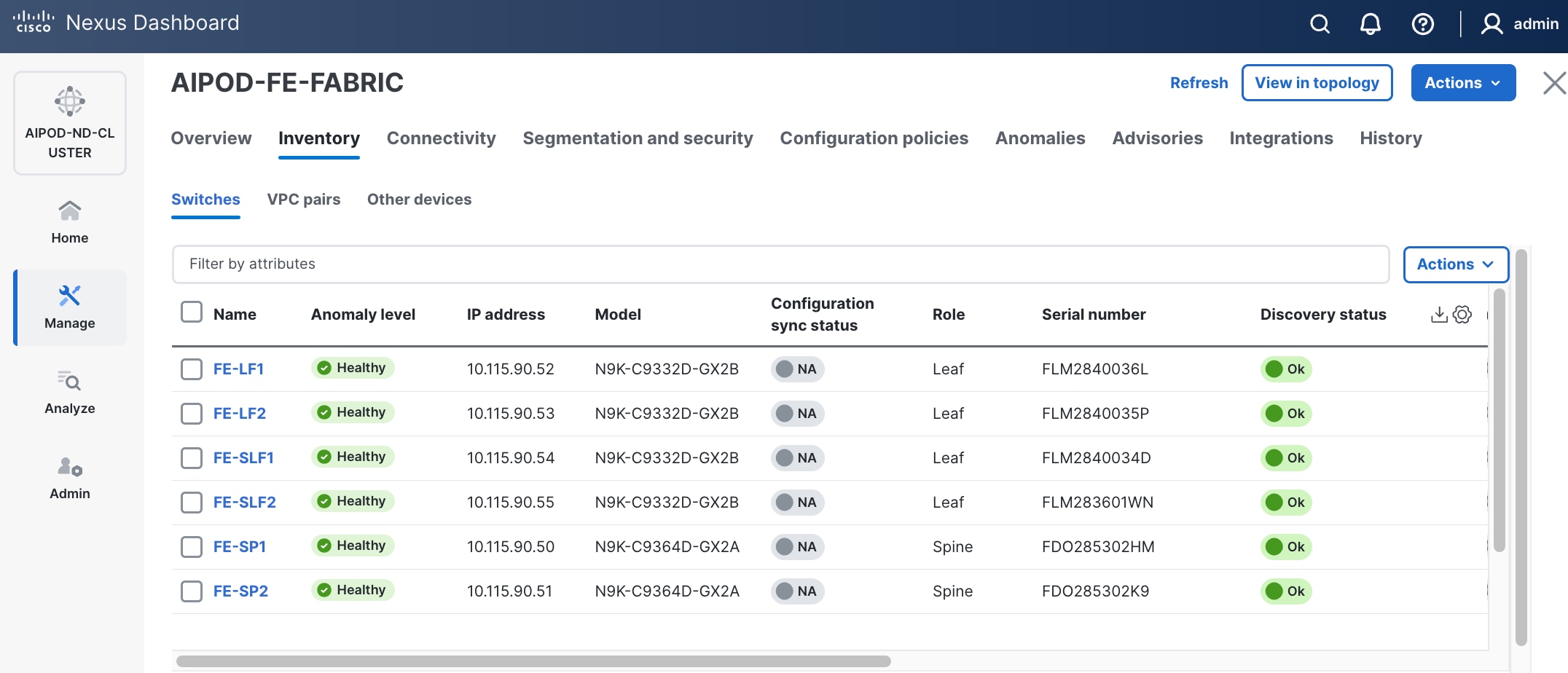

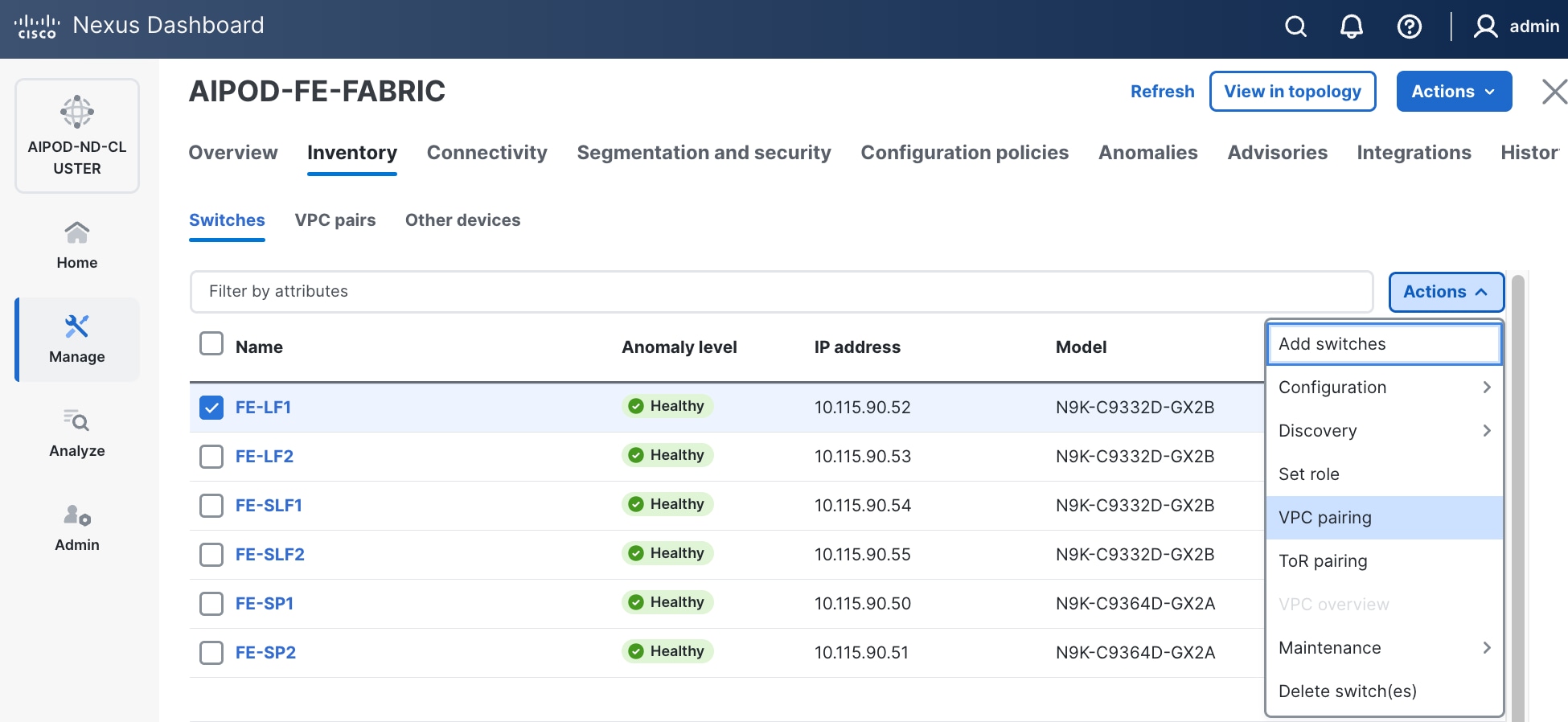

Step 23. From the Manage > Fabrics, select the fabric and click Inventory tab.

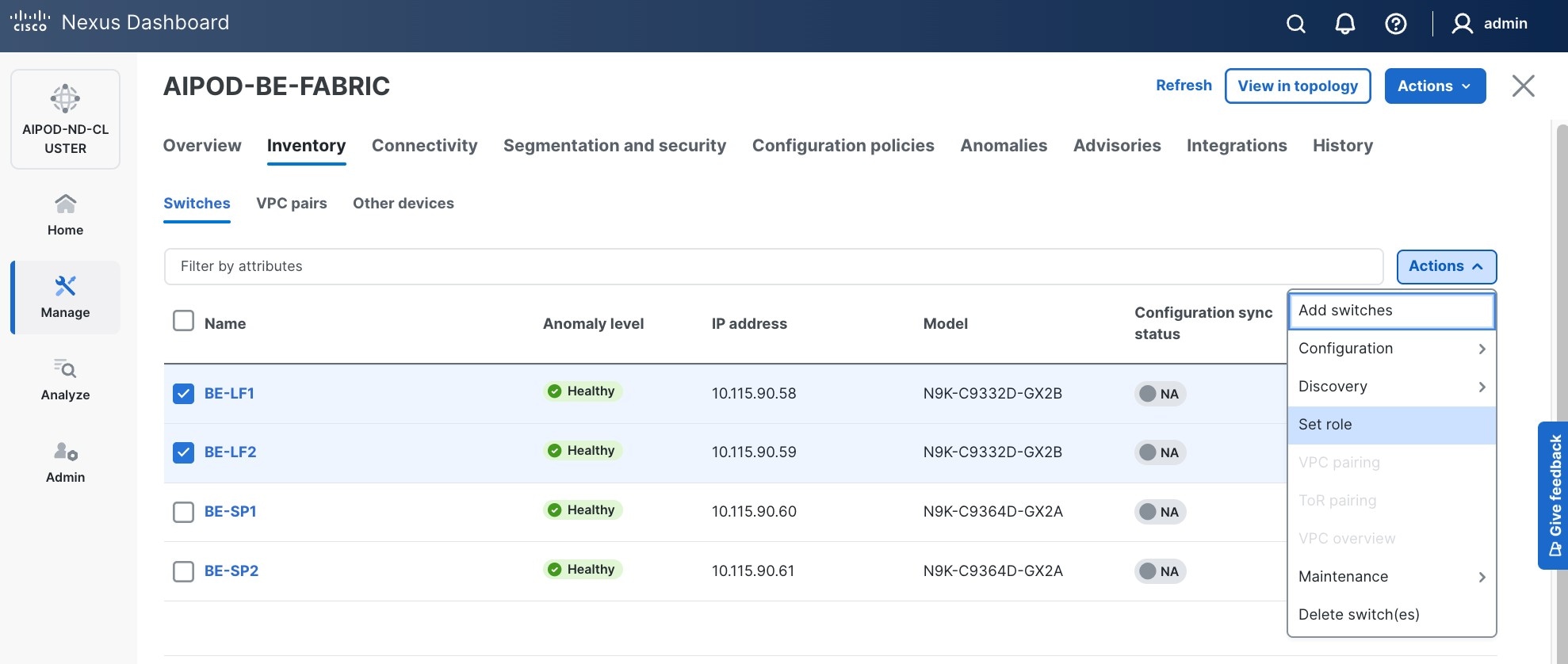

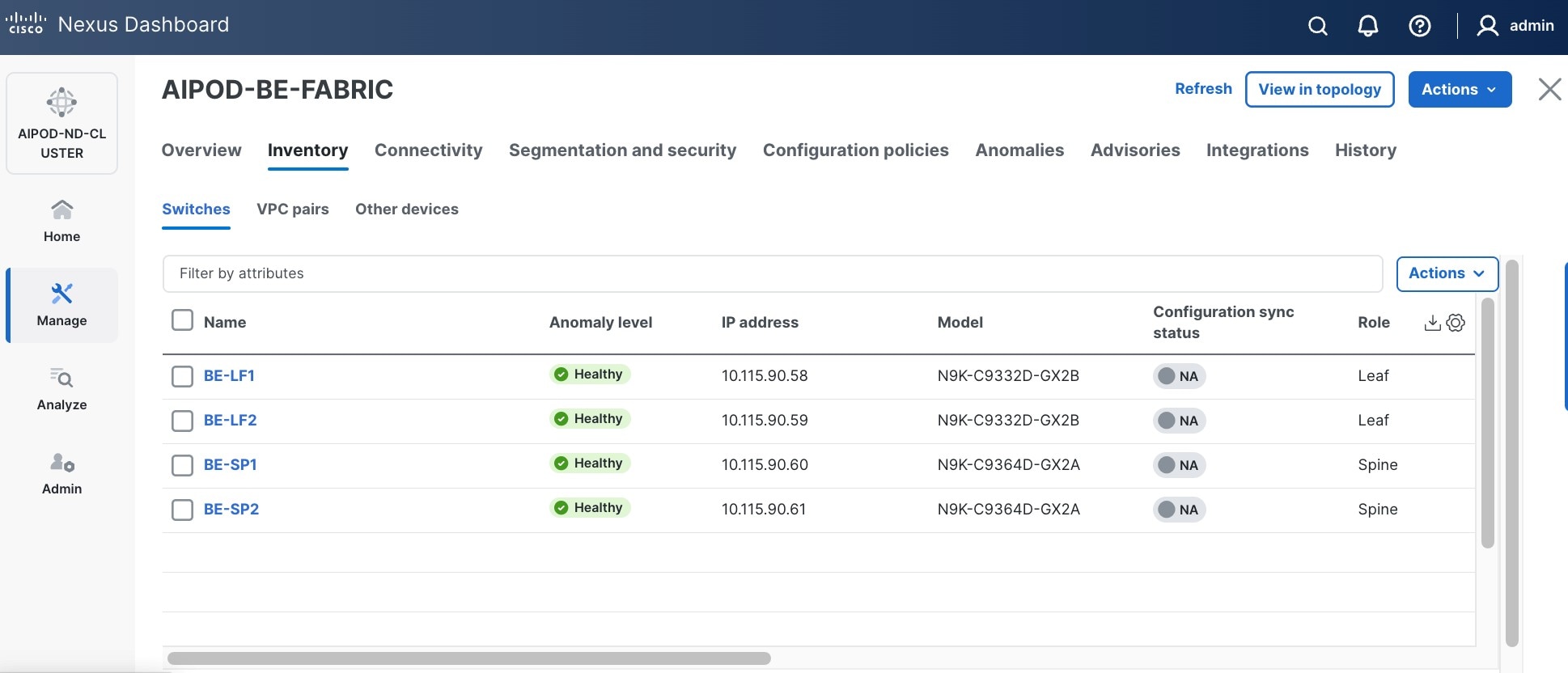

Step 24. For each switch in the list, verify Role is correct. To change the role, select the switch and then click the lower of the two Actions buttons and select Set role from the drop-down list.

Step 25. In the Select Role pop-up window, select the correct role from the list and click Select.

Step 26. Click OK in the pop-up warning to perform "Recalculate and deploy" to complete the change.

Step 27. Repeat these steps to select and confirm the role for all switches in the fabric.

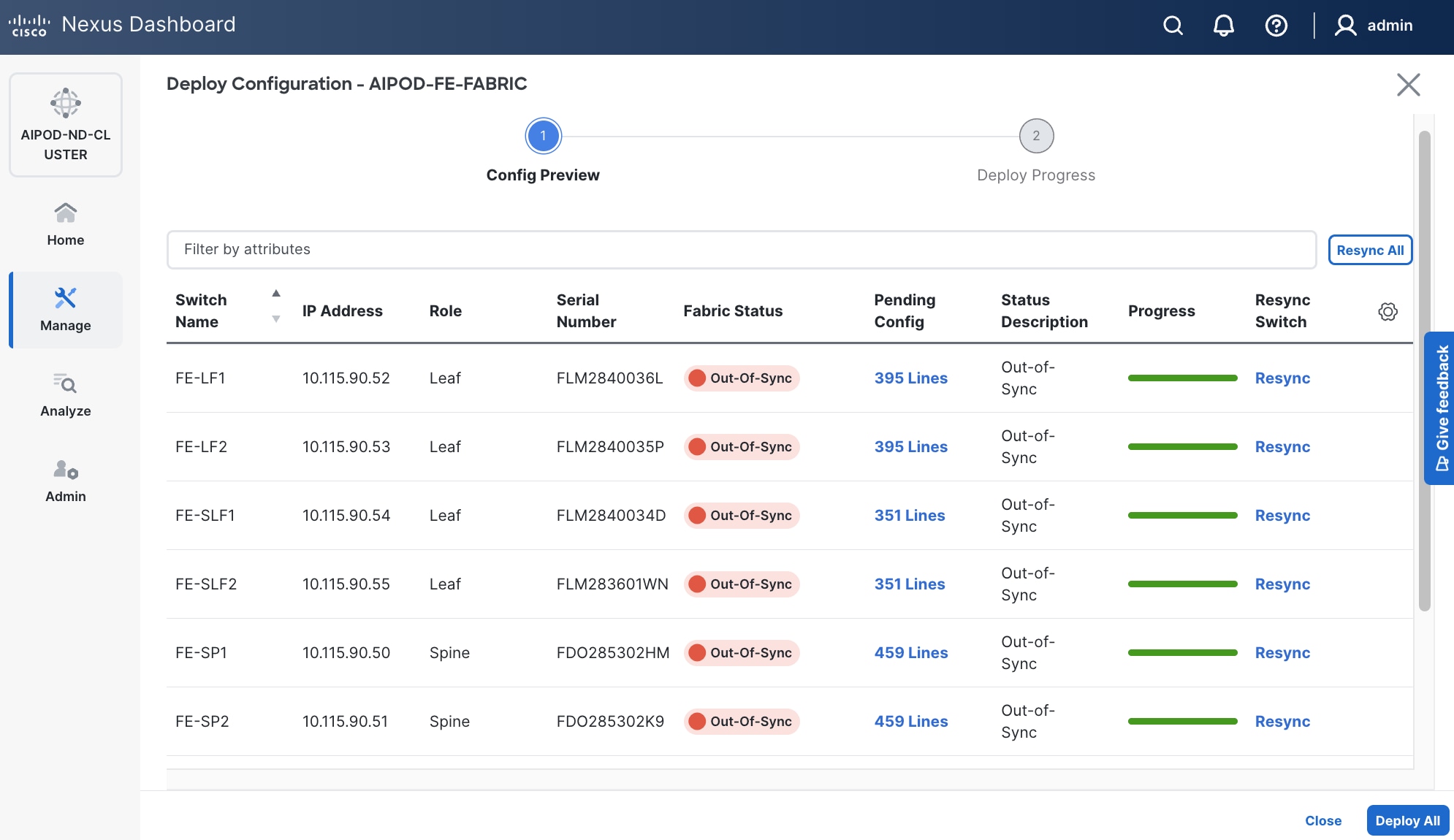

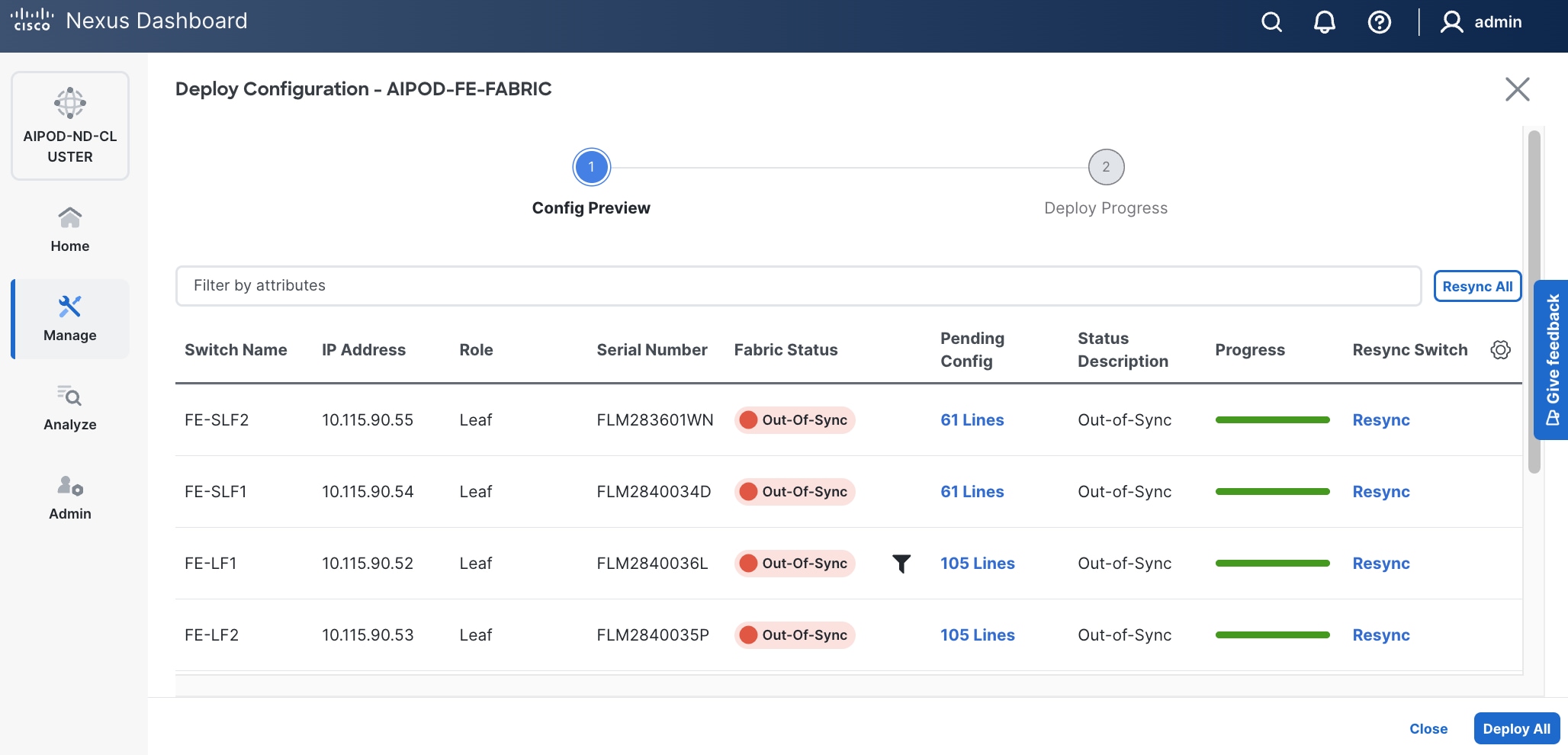

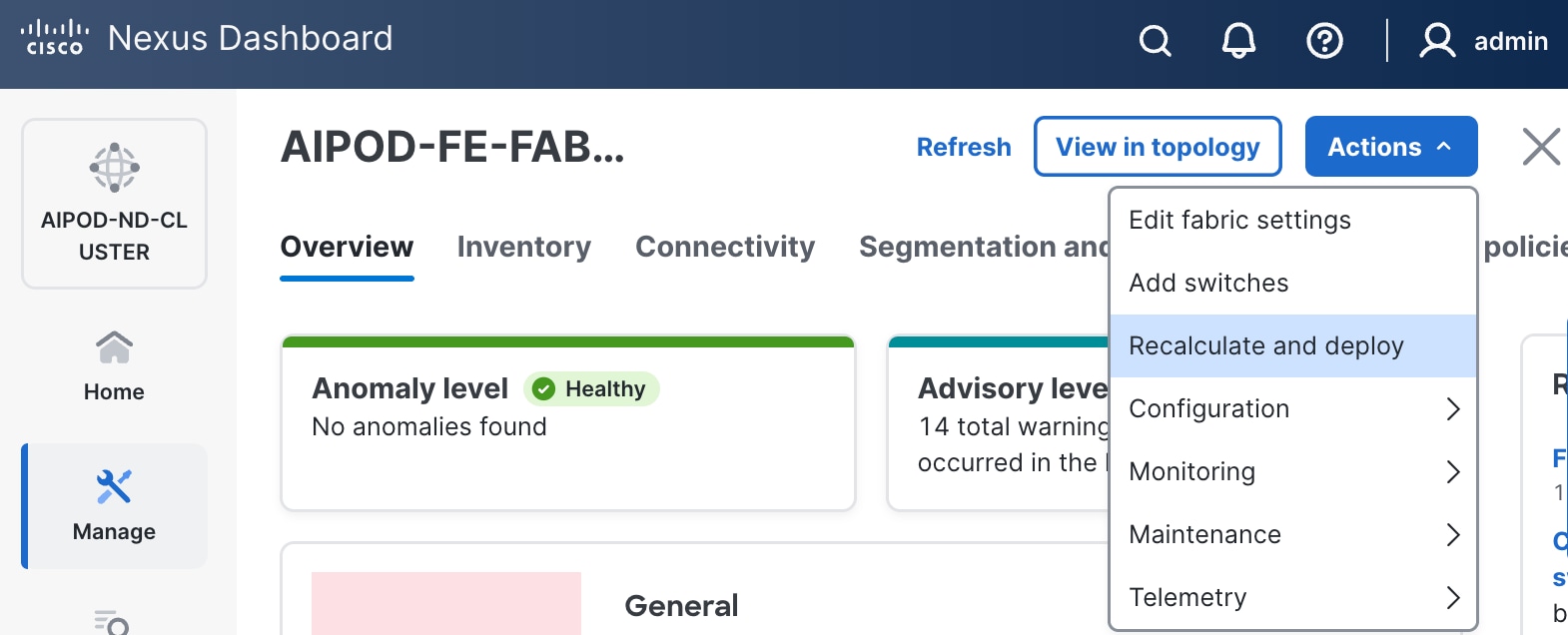

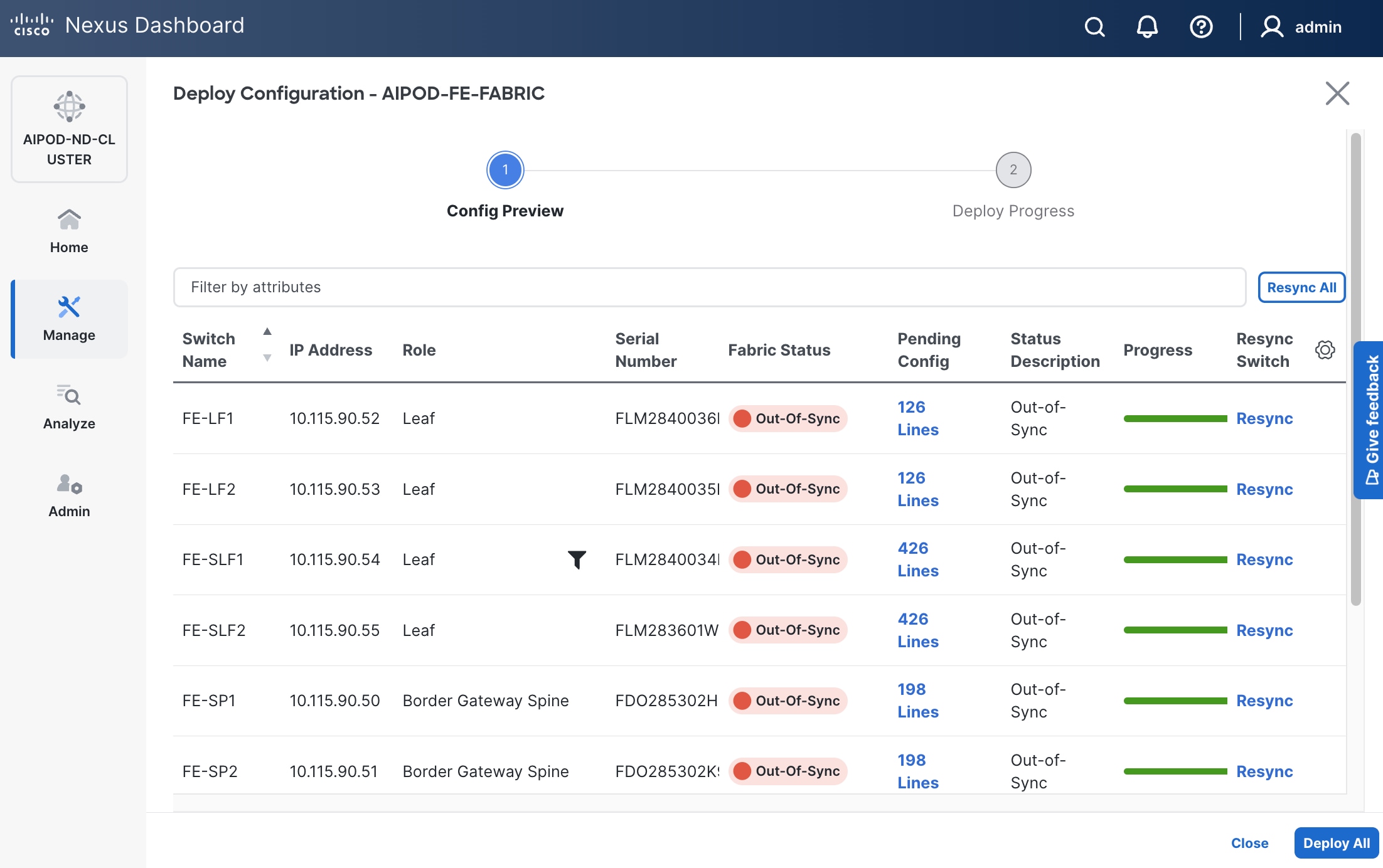

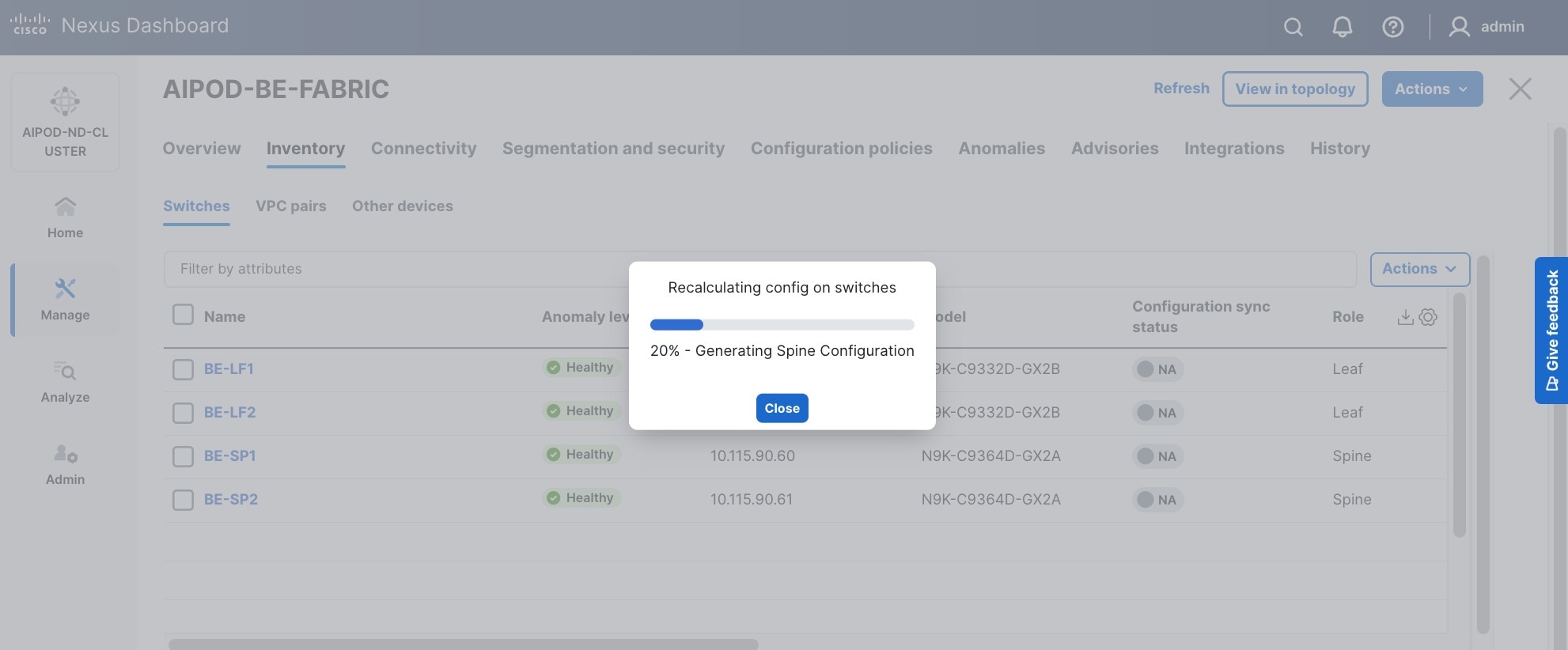

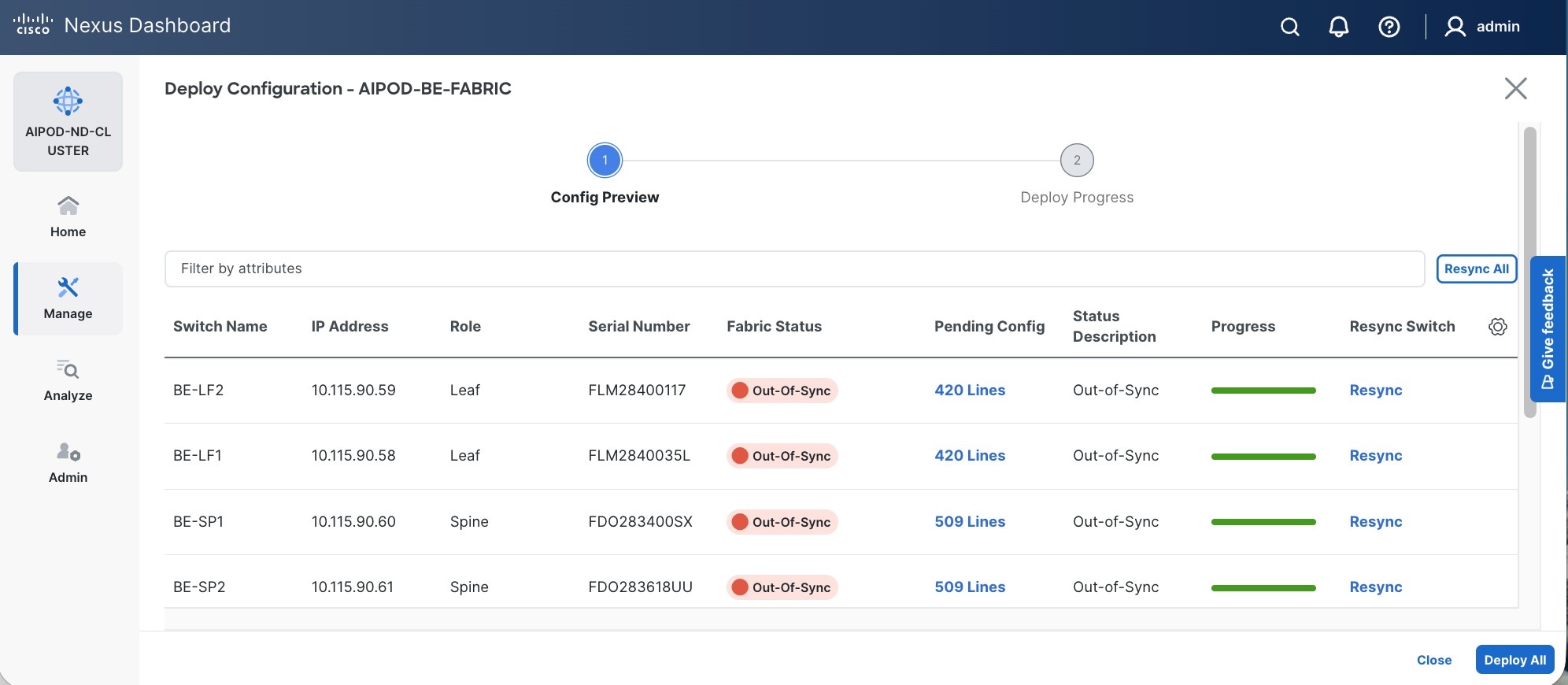

Step 28. Click the higher of the two Actions buttons and select Recalculate and deploy from the drop-down list. If it says one is already in progress, wait a few minutes and repeat the steps. You should see the Fabric as Out-of-sync with some Pending Config (lines of config) change.

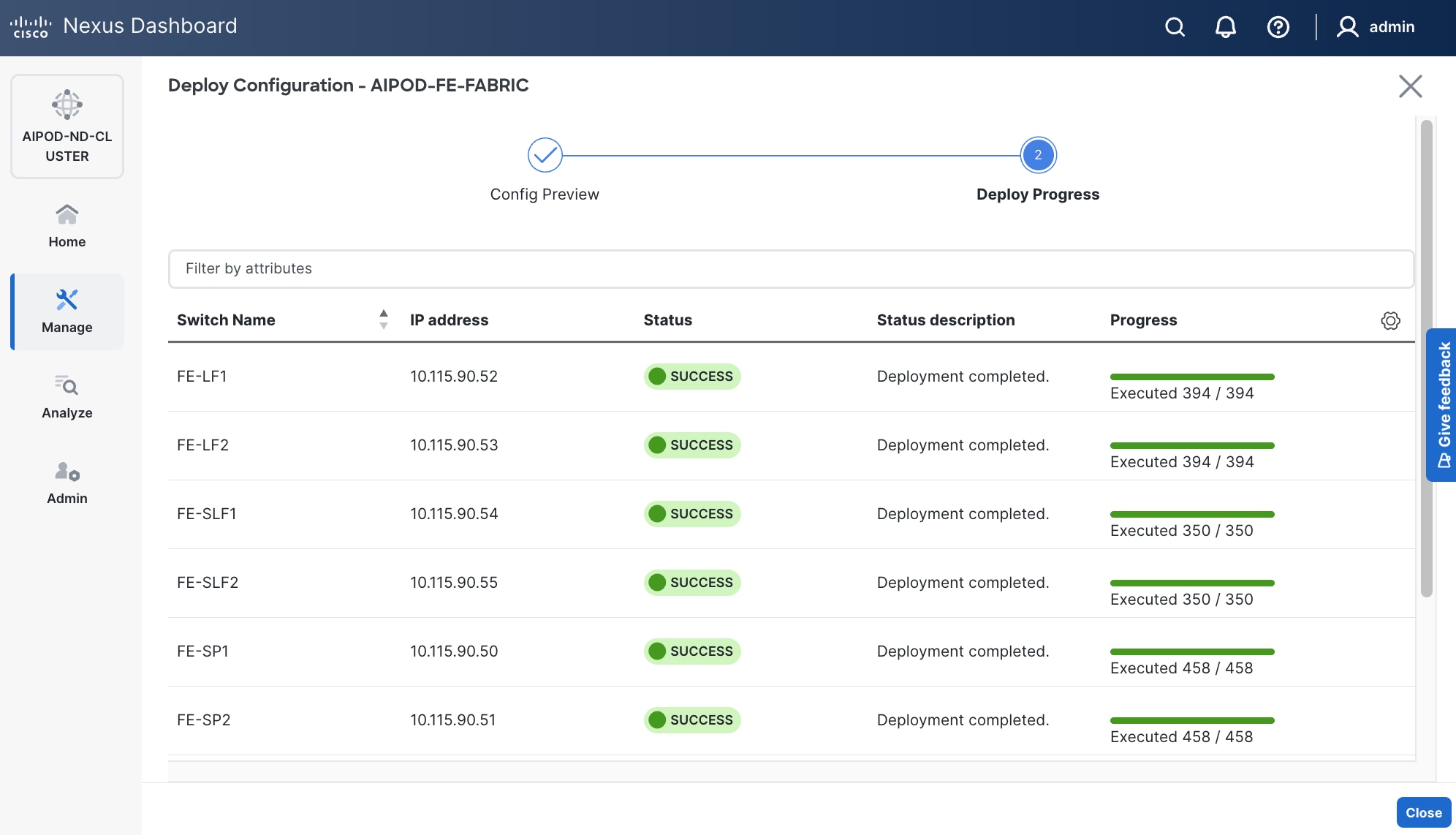

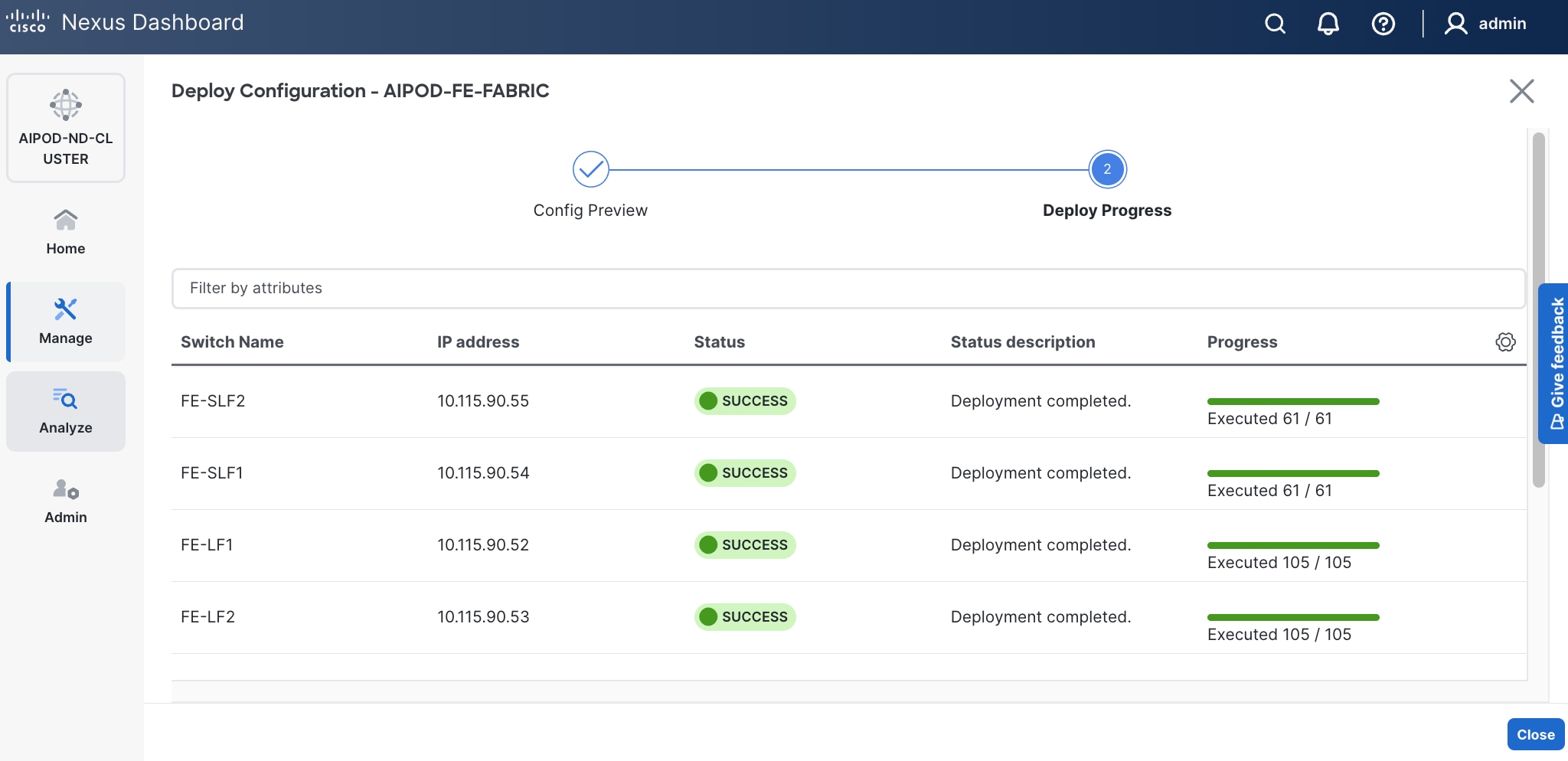

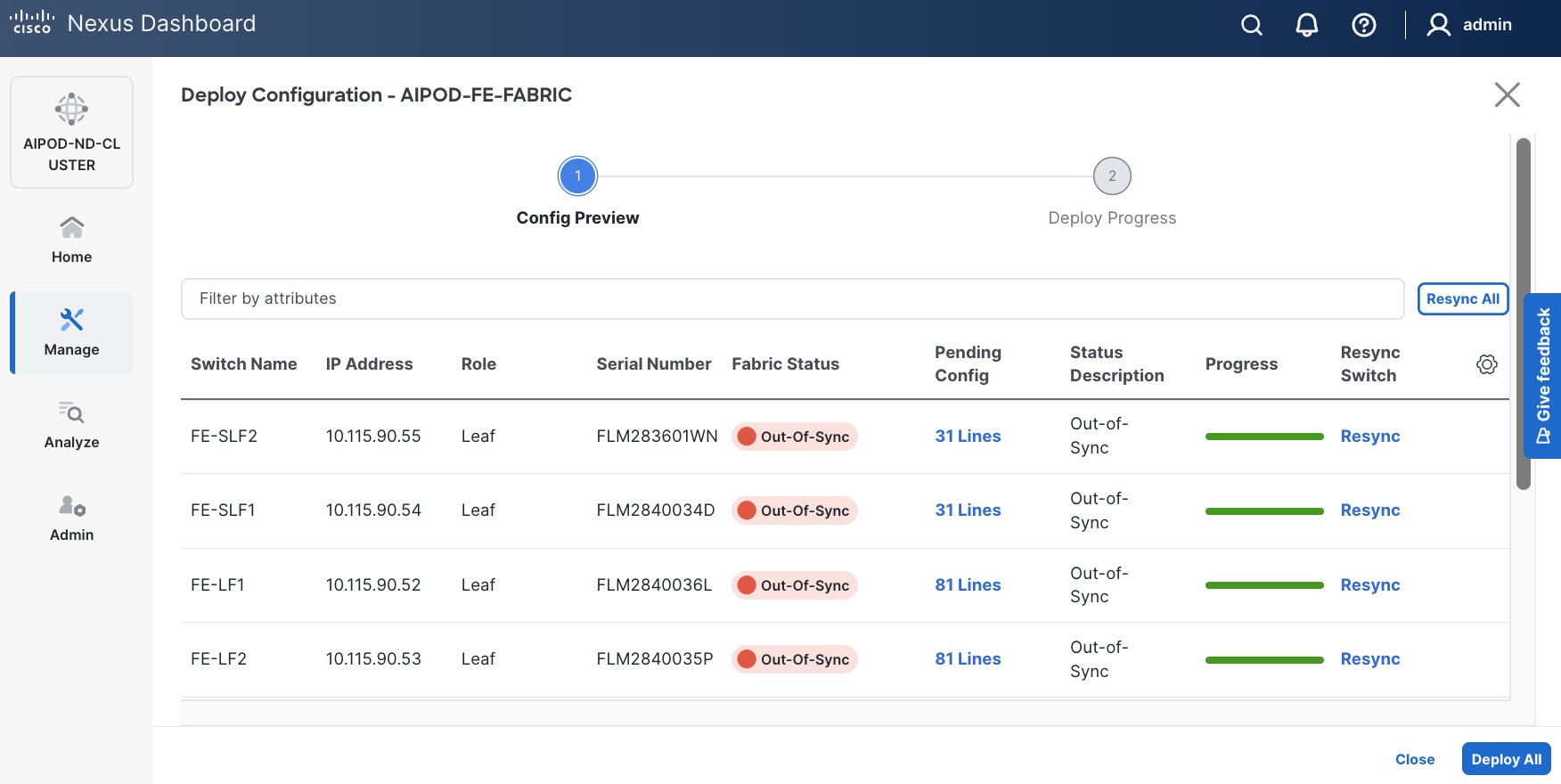

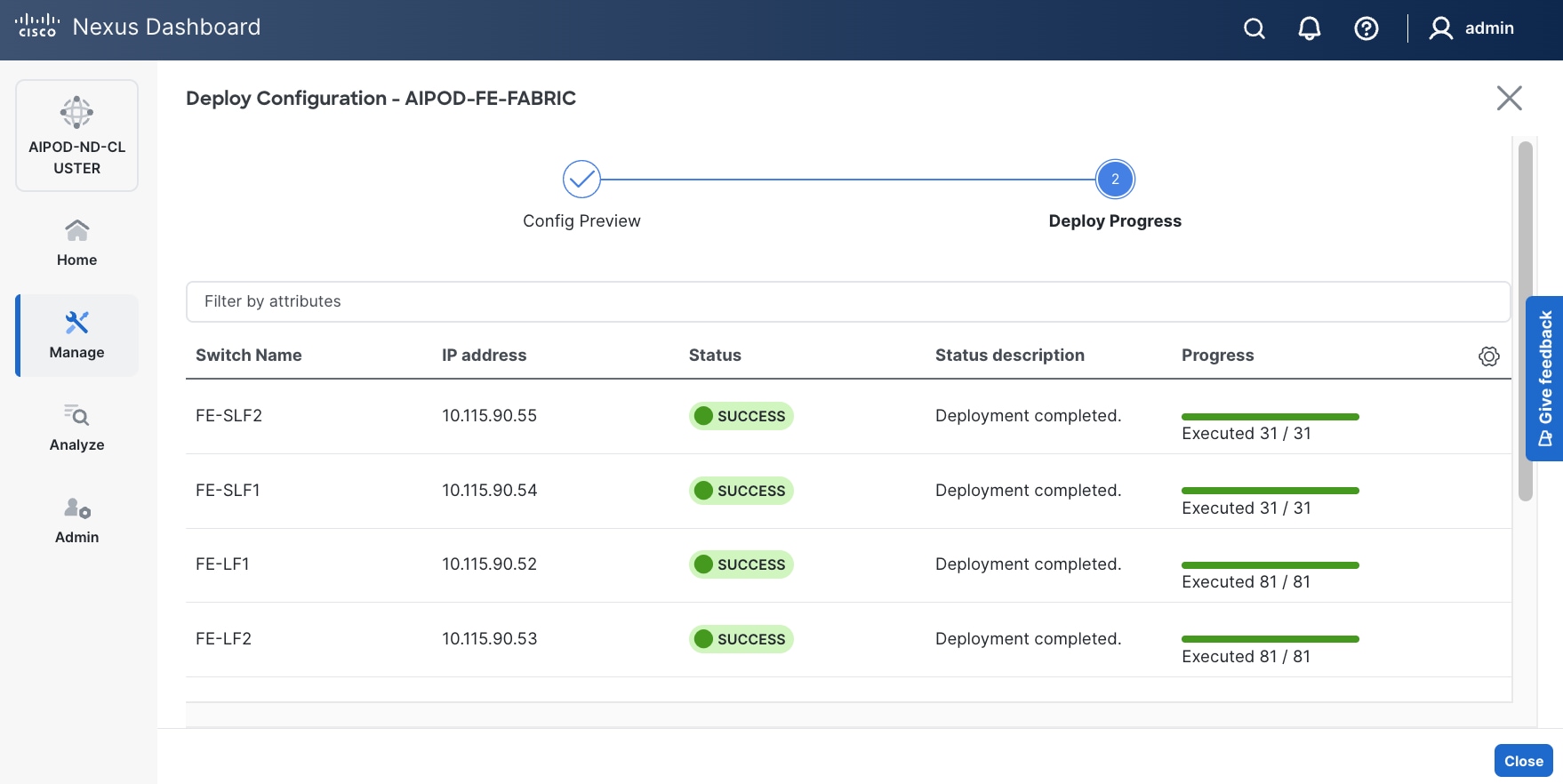

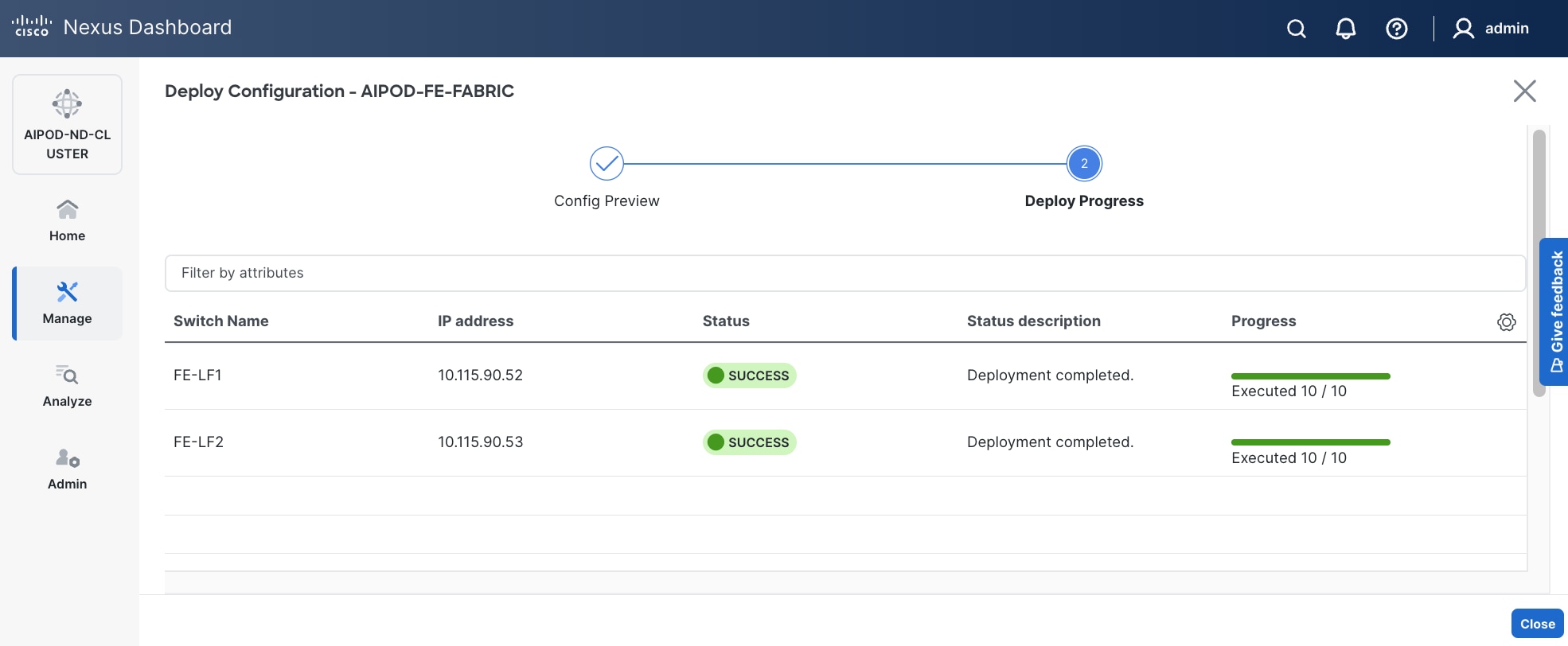

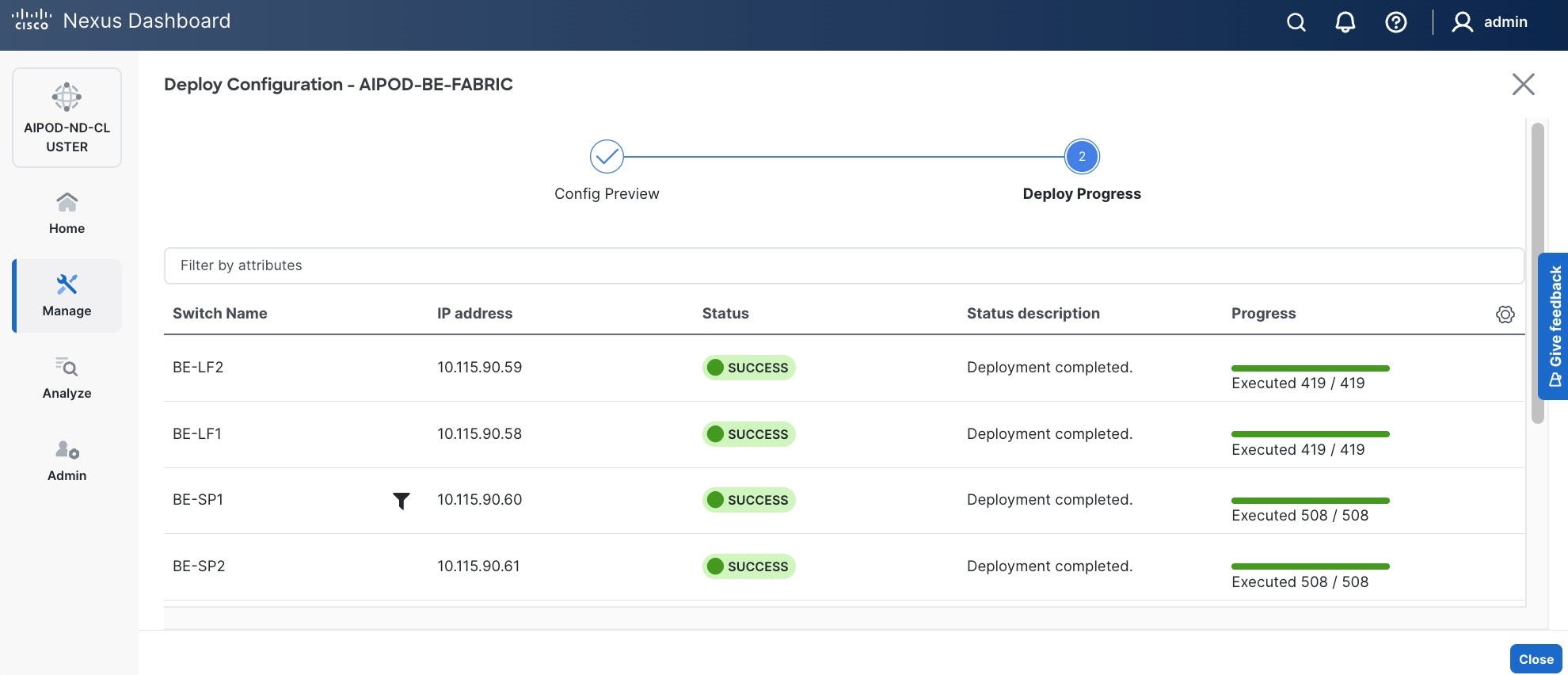

Step 29. Click Deploy All.

Step 30. Click Close.

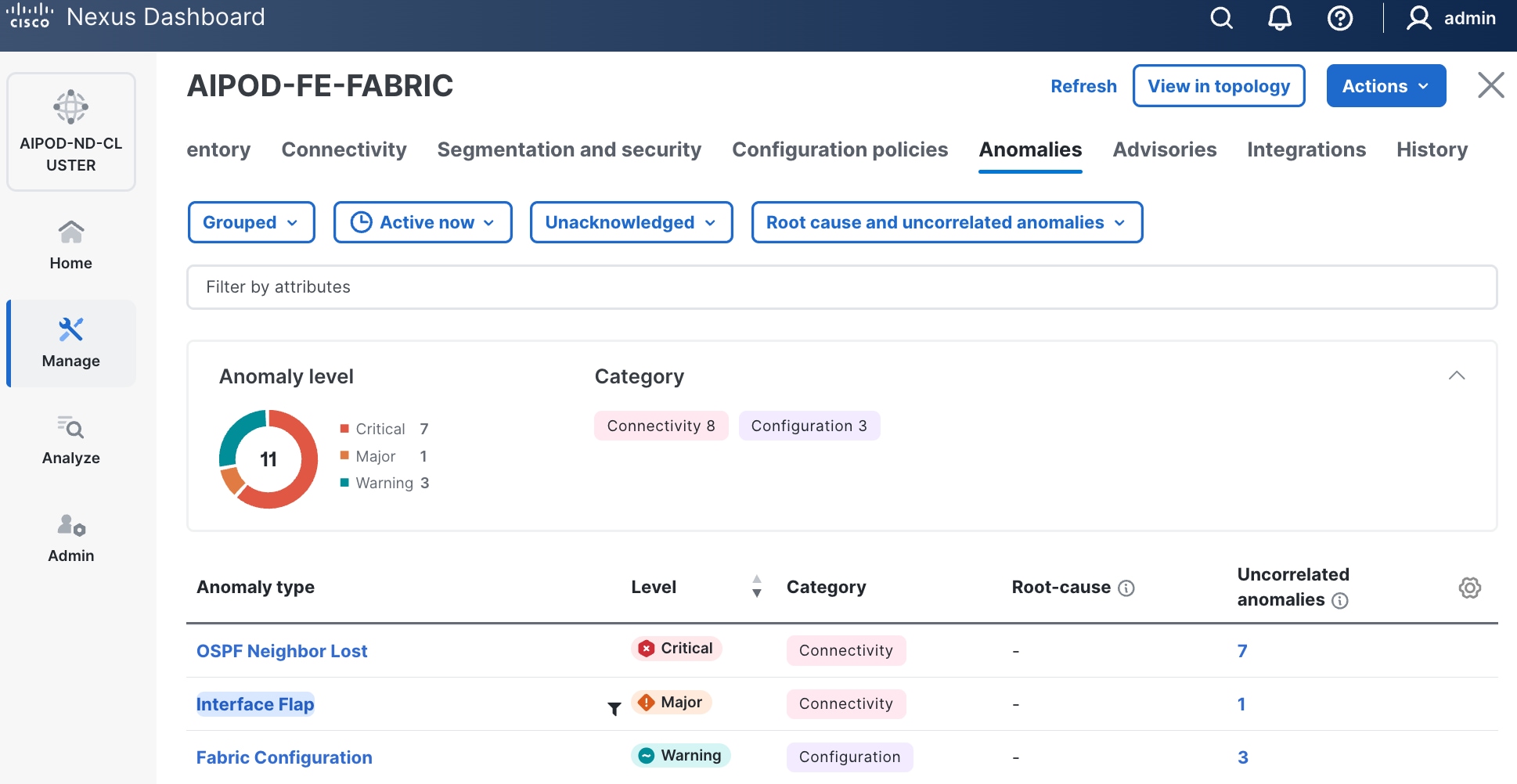

Step 31. ND will identify issues in hardware, connectivity, software and so on, reflected by the Anomaly level. To view the flagged anomalies, navigate to Anomalies in the top menu bar. Address each anomaly to prevent issues later, either by resolving them or acknowledging them.

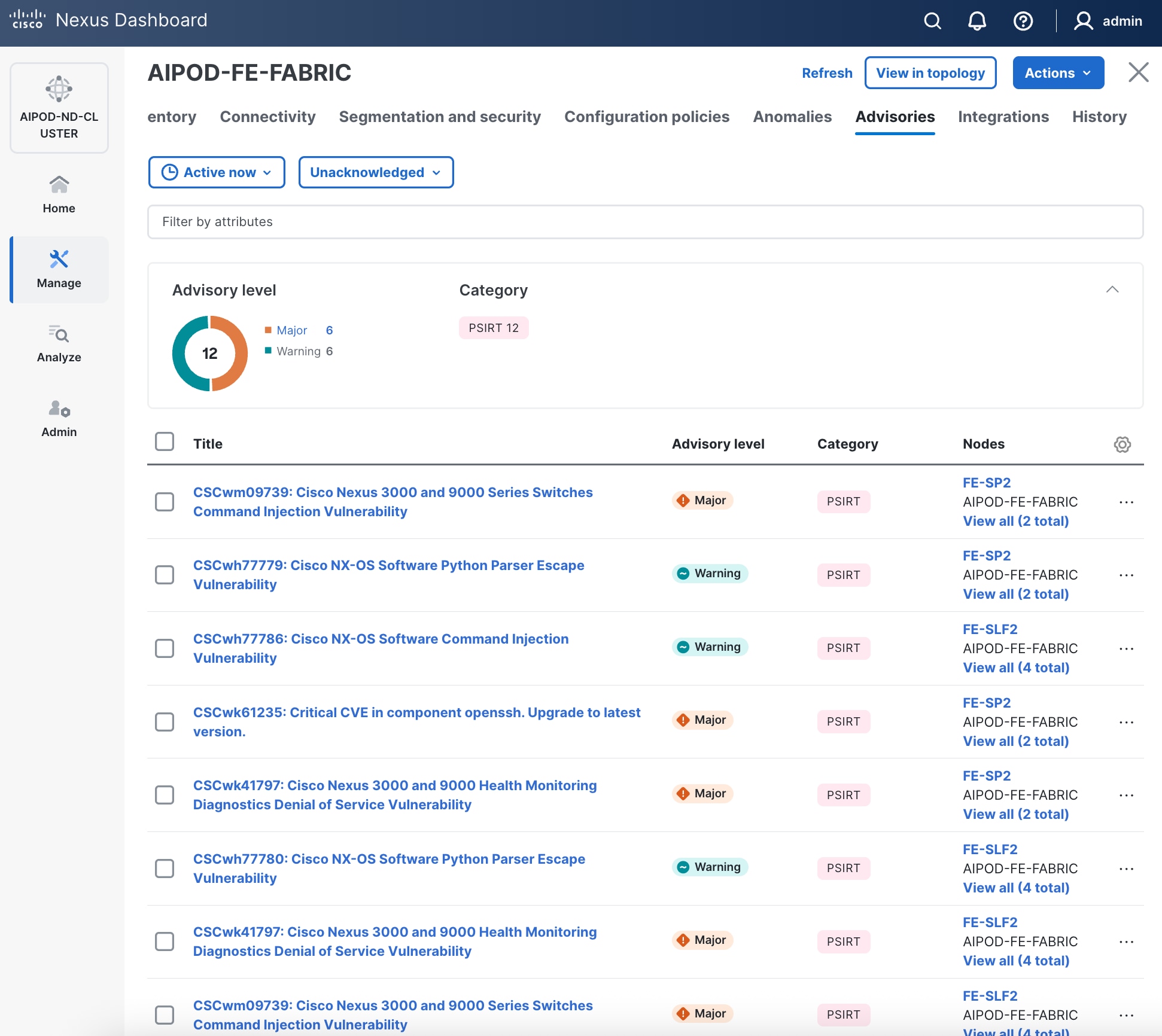

Step 32. Review the Advisories and resolve or acknowledge them.

Step 33. Evaluate and upgrade to Cisco recommended Nexus OS release.

Step 34. Now start attaching compute, storage and other end devices to the cluster.

Enable vPC Pairing on Compute/Management Leaf Switches in the FE Fabric

To enable vPC pairing on the compute/management in the FE fabric, follow the procedures below.

Procedure 1. Enable vPC pairing for compute/management leaf switches in the FE fabric

Step 1. Use a web browser to navigate to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and click Inventory tab.

Step 4. To enable VPC pairing on the leaf switches that connect to UCS compute (GPU and management) nodes, select the first leaf switch in the leaf pair.

Step 5. Click the lower of the two Actions buttons and select VPC pairing from the drop-down list.

Step 6. Select the VPC peer switch for the first compute/management leaf. Enable Virtual Peerlink.

Step 7. Click Save.

Step 8. Click OK in the Success pop-up window.

Step 9. Select the two leaf switches in the vPC pair that are now Out-of-sync from the configuration change. Click the higher of the two Actions buttons and select Recalculate and deploy from the drop-down list.

Step 10. Click Deploy All.

Step 11. When the configuration deployment completes successfully, click Close.

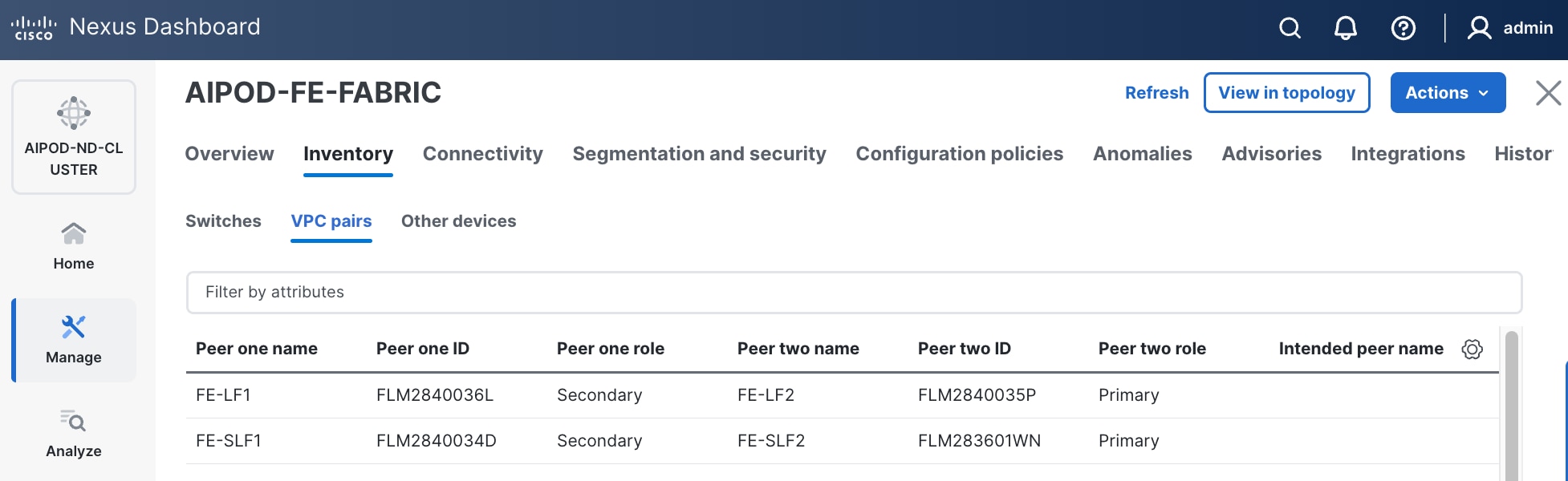

Step 12. In the Inventory tab, navigate to VPC pairs tab to see the newly created vPC pair.

Enable vPC Pairing on Storage Leaf Switches in the Frontend Fabric

To enable vPC pairing for the storage leaf switches in the FE fabric, follow the procedures below.

Procedure 1. Enable vPC pairing for storage leaf switches in the FE fabric

Step 1. Repeat the previous procedure to configure storage leaf switches in the FE fabric as vPC peers.

Step 2. In the Inventory tab, navigate to VPC pairs tab to see the newly created vPC pairs.

Step 3. From the left navigation menu, if you now go to Manage > Fabric and select the FE fabric and then the Topology tab, you should now see the 2 Leaf switch pairs grouped in a box, indicating they are vPC peers.

Enable Layer 2 Connectivity to Management UCS X-Direct from FE fabric

Table 8. Setup Parameters for FE Fabric: Layer 2 Connectivity to Management UCS X–Direct

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| Leaf Switches |

FE-LF1, FE-LF2 |

|

| Management UCS |

UCS X-Direct with (-A, -B) uplinks; Both uplinks are dual-homed to FE-LF1 & FE-LF2 |

With multiple servers |

| Virtual Port Channel (vPC) |

To UCS X-Direct |

Management UCS-X Direct Chassis |

| vPC/PC1 - ID |

15 |

To UCS X-Direct: Side-A |

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/5, 1/7 |

FI-A: Ports 1/1-4 (PC-11) |

| vPC/PC2 – ID |

16 |

To UCS X-Direct: Side-B |

| Ports |

1/6, 1/8 |

FI-B: Ports 1/1-4 (PC-12) |

To enable Layer 2 connectivity to management Cisco UCS X-Series Direct chassis from the FE fabric, follow the procedures below. You will be configuring two vPCs to the management Cisco UCS X-Series Direct, one for -A side and another for -B side. Each vPC will use multiple ports on each compute leaf switch pair to connect to -A and -B uplinks on Cisco UCS X-Series Direct chassis.

Procedure 1. Deploy first vPC to Management UCS X-Series Direct

Step 1. Use a web browser to navigate to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

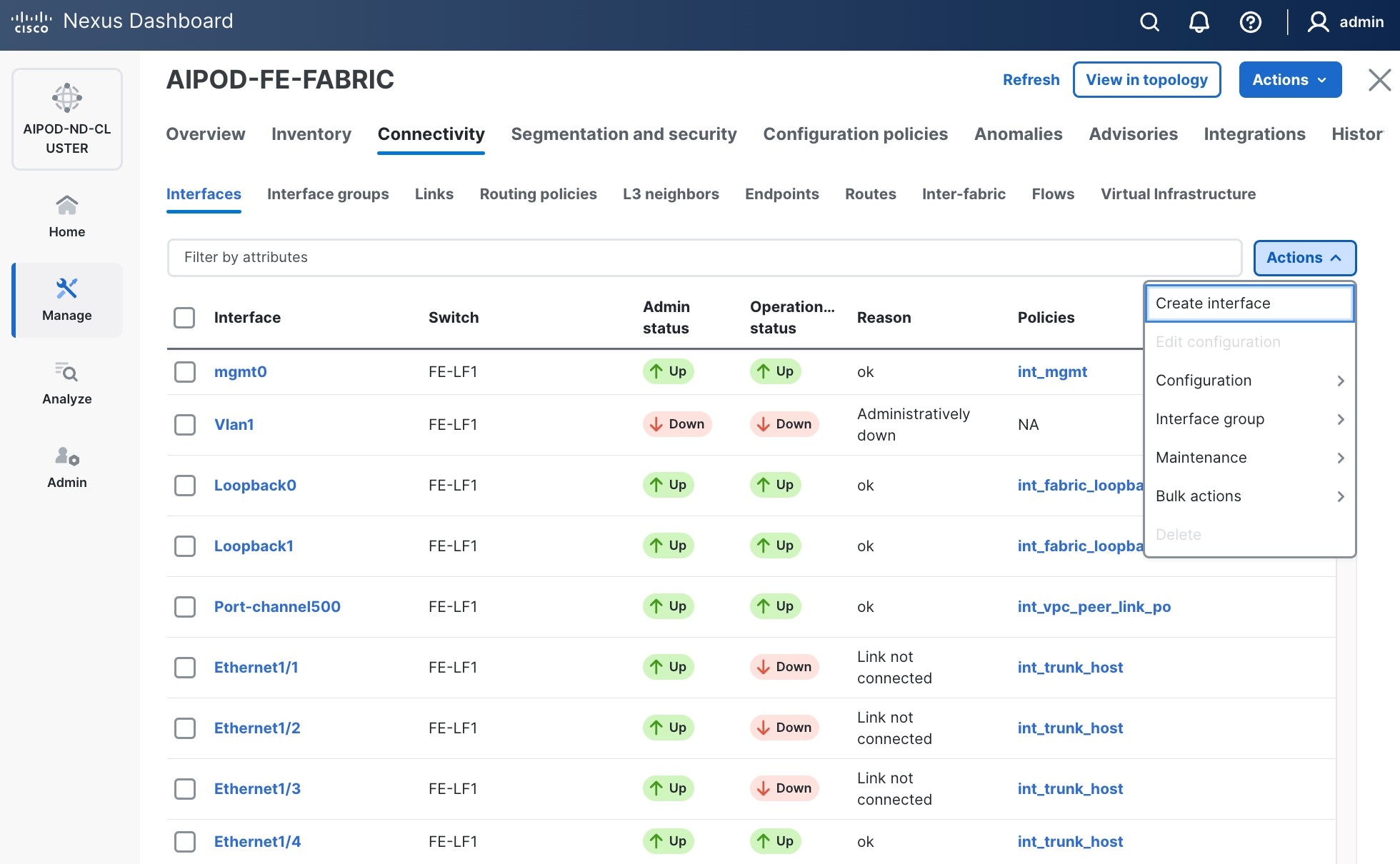

Step 2. From the left navigation menu, go to Manage > Fabrics.

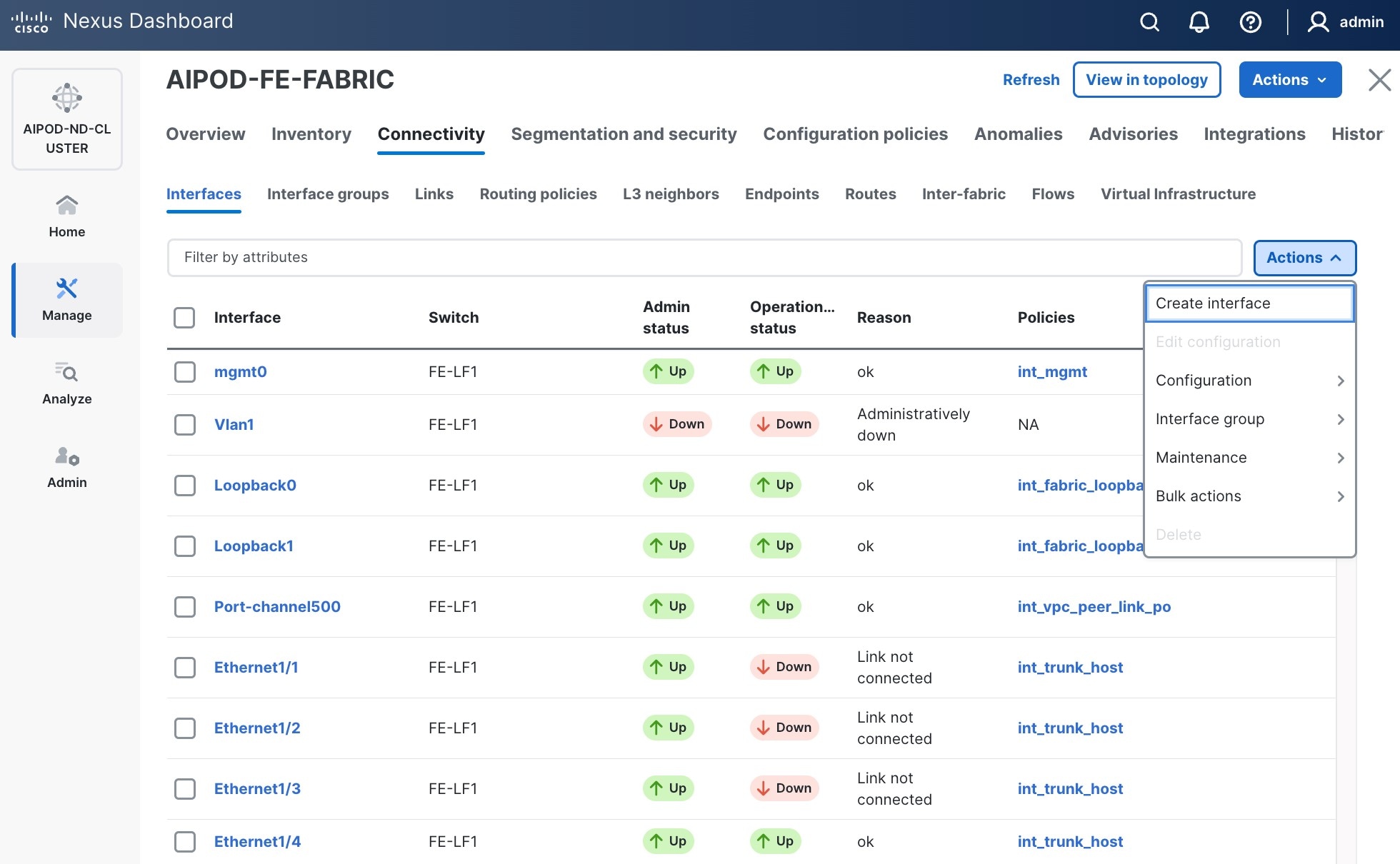

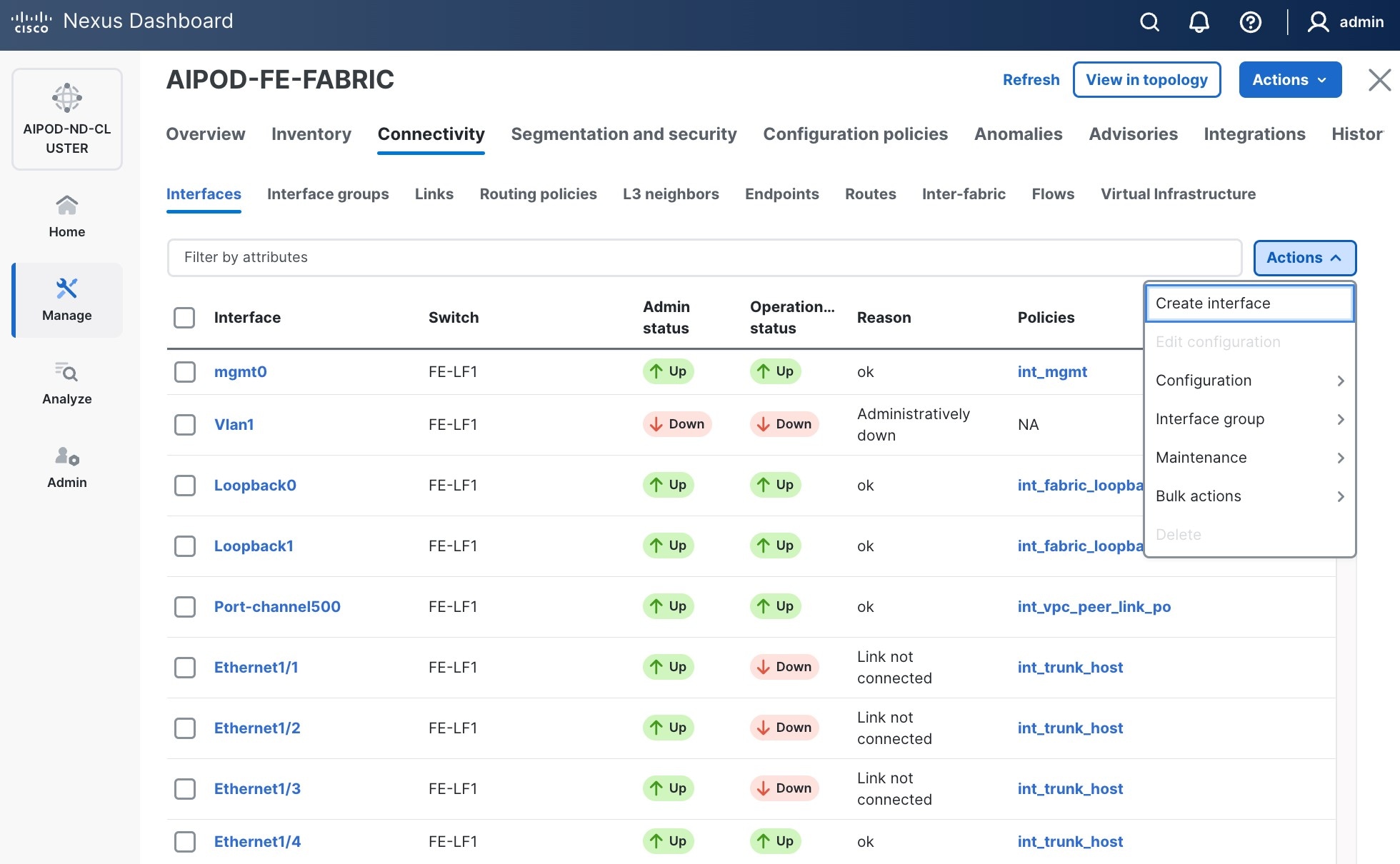

Step 3. Select the FE fabric and navigate to Connectivity > Interfaces tab.

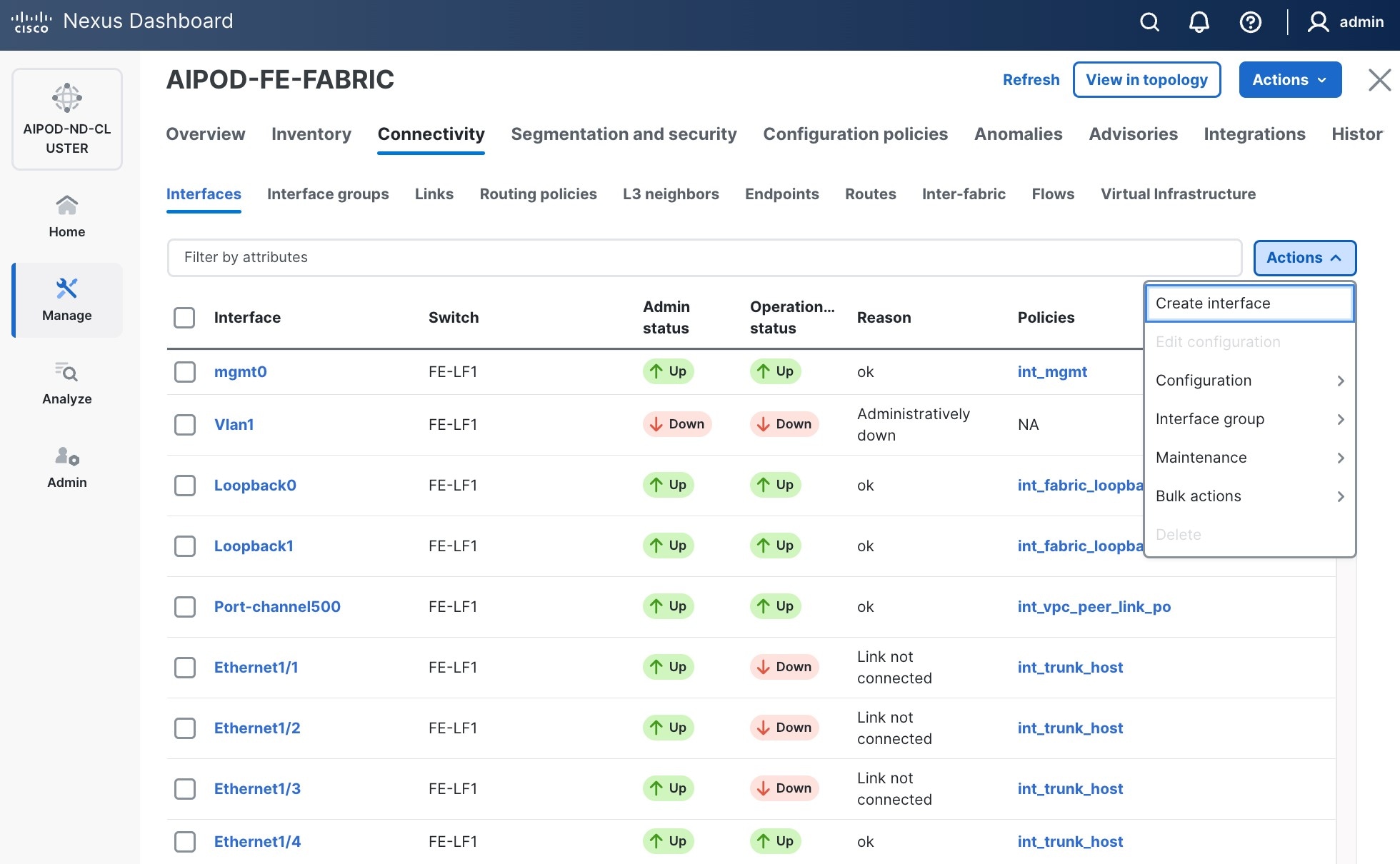

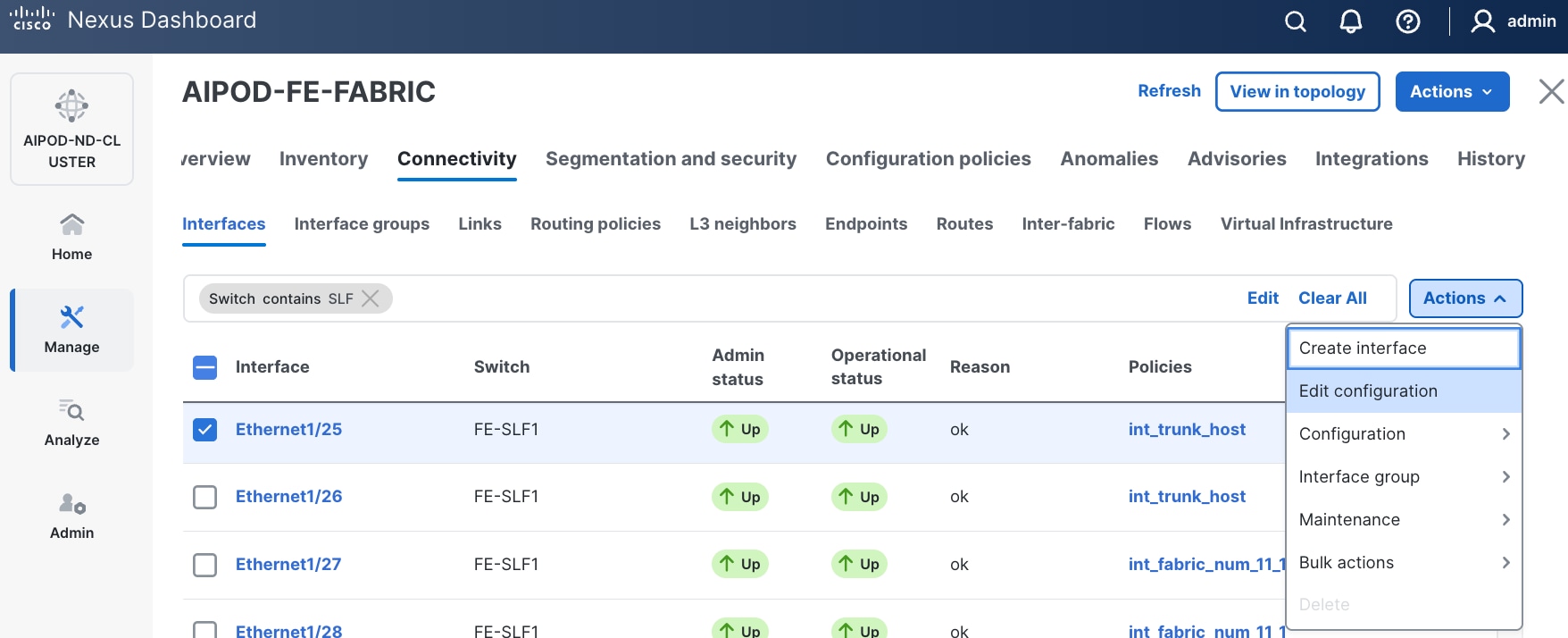

Step 4. Click the lower of the two Actions buttons and select Create interface.

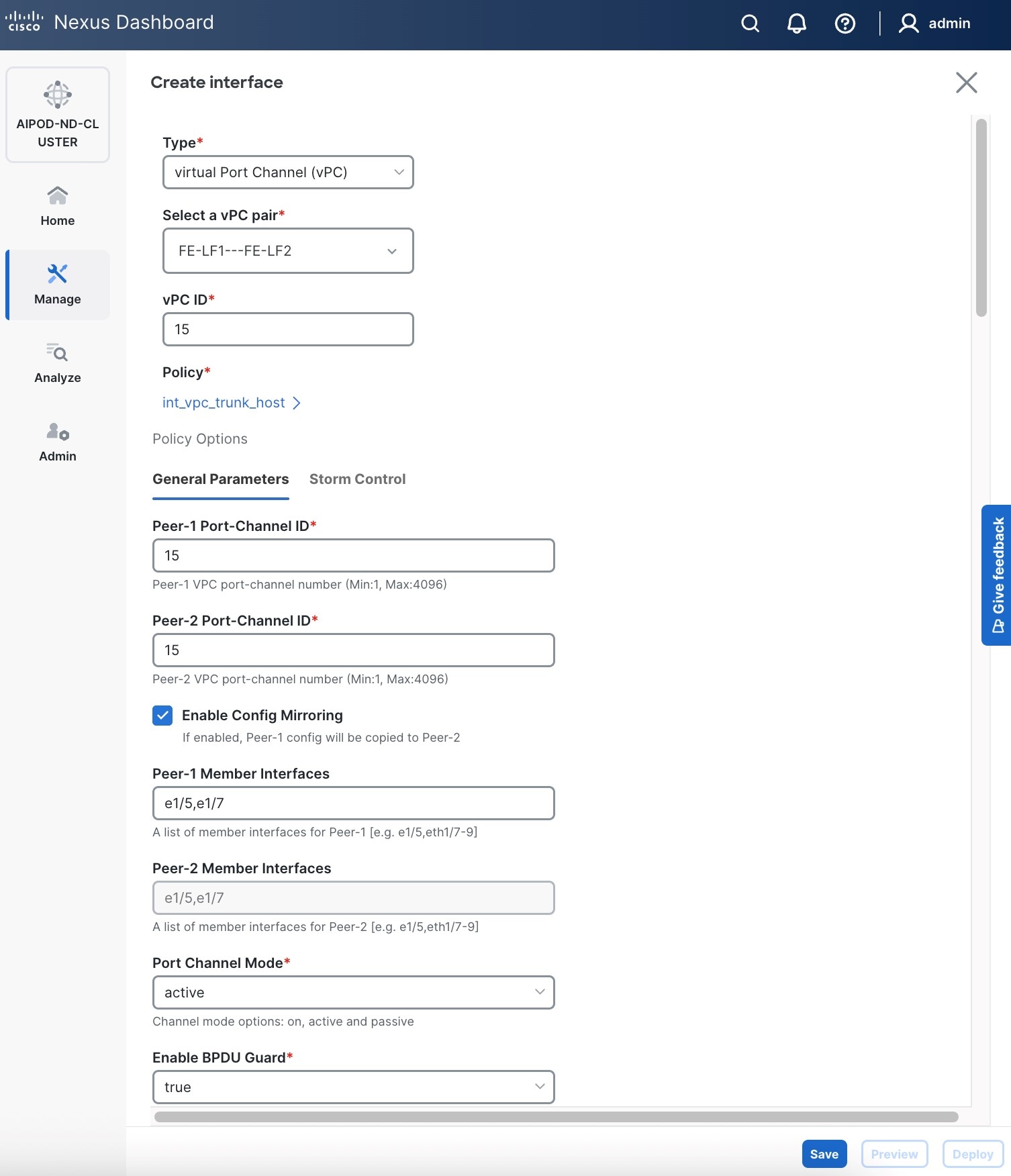

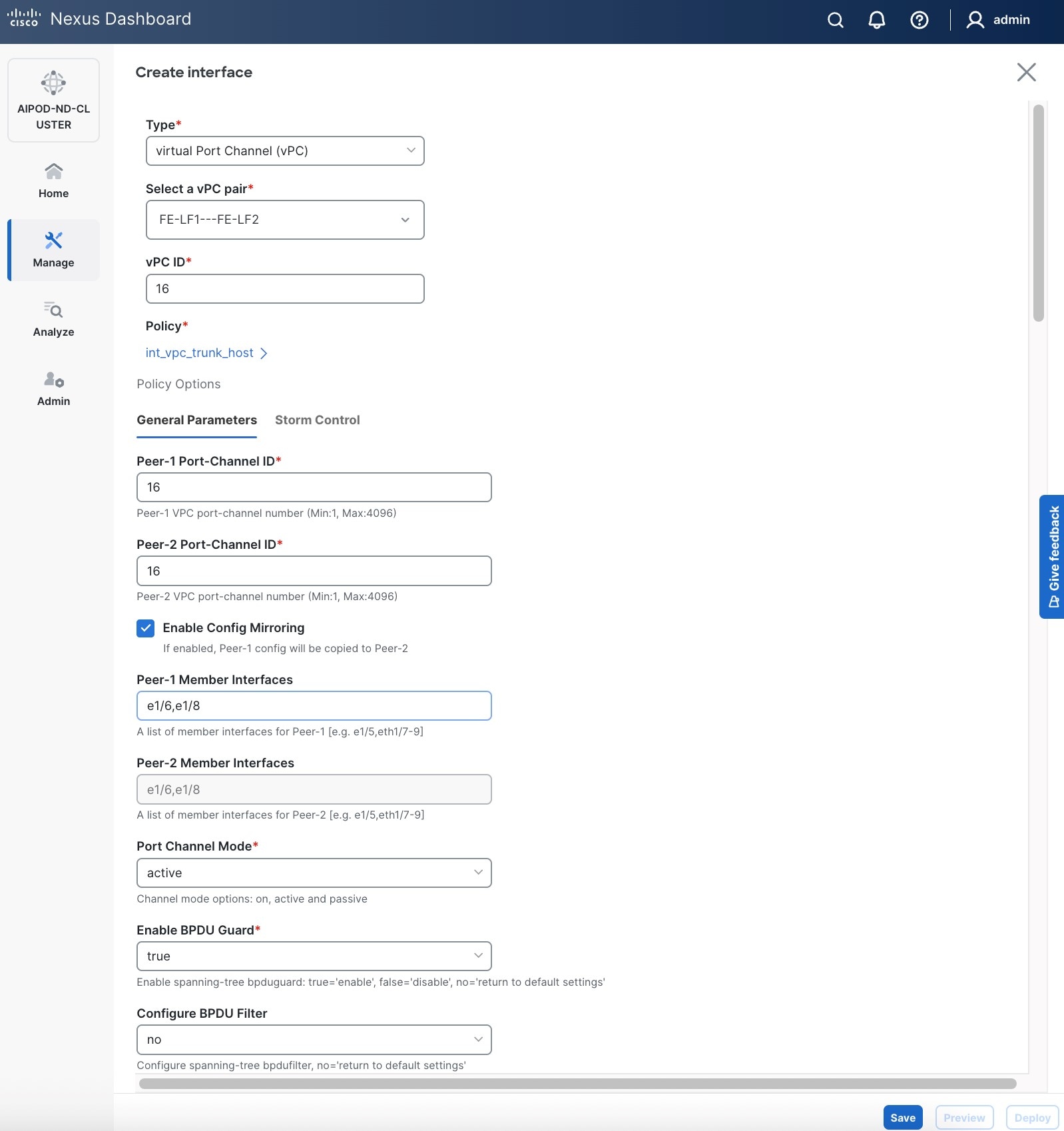

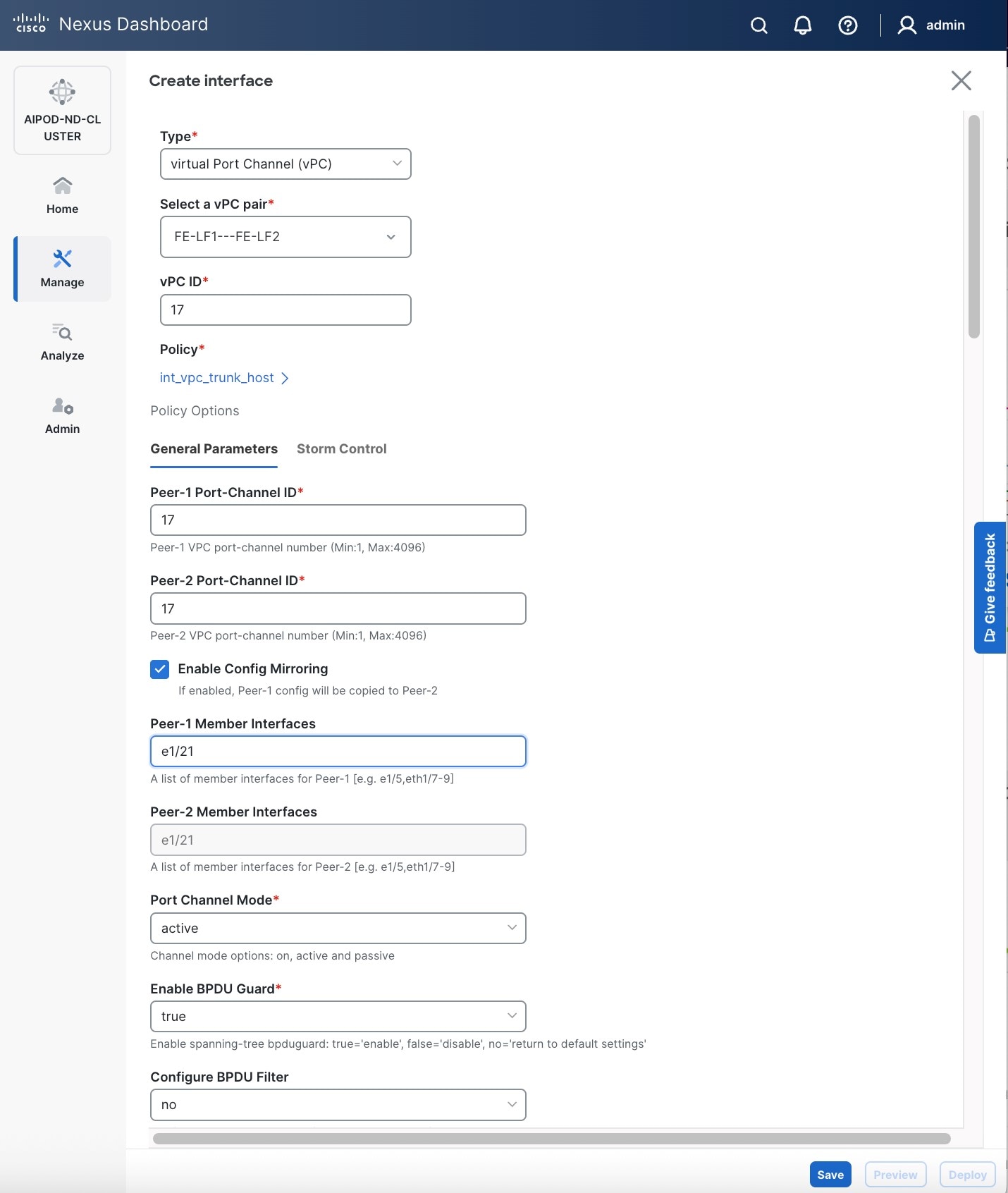

Step 5. In the Create interface window:

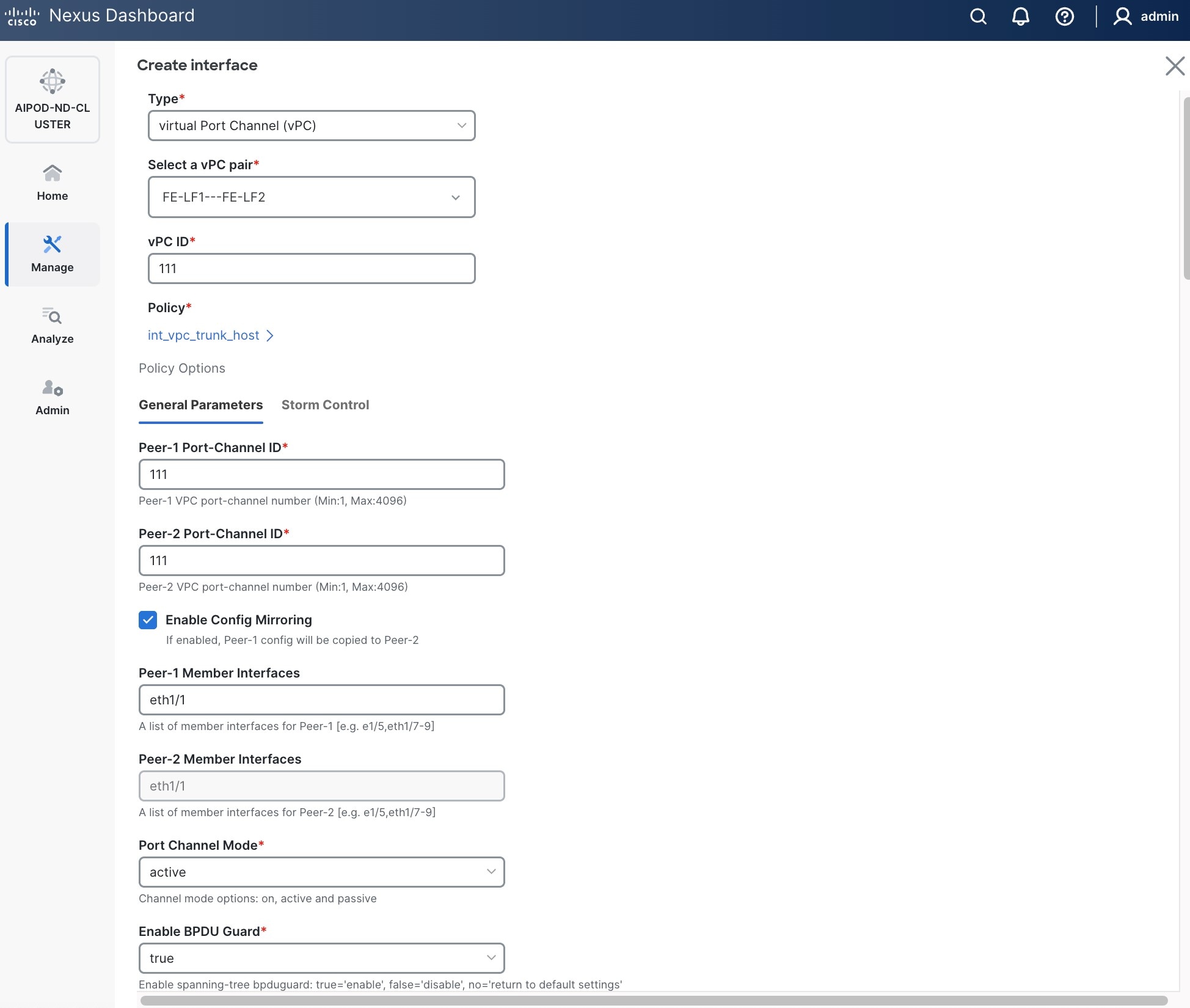

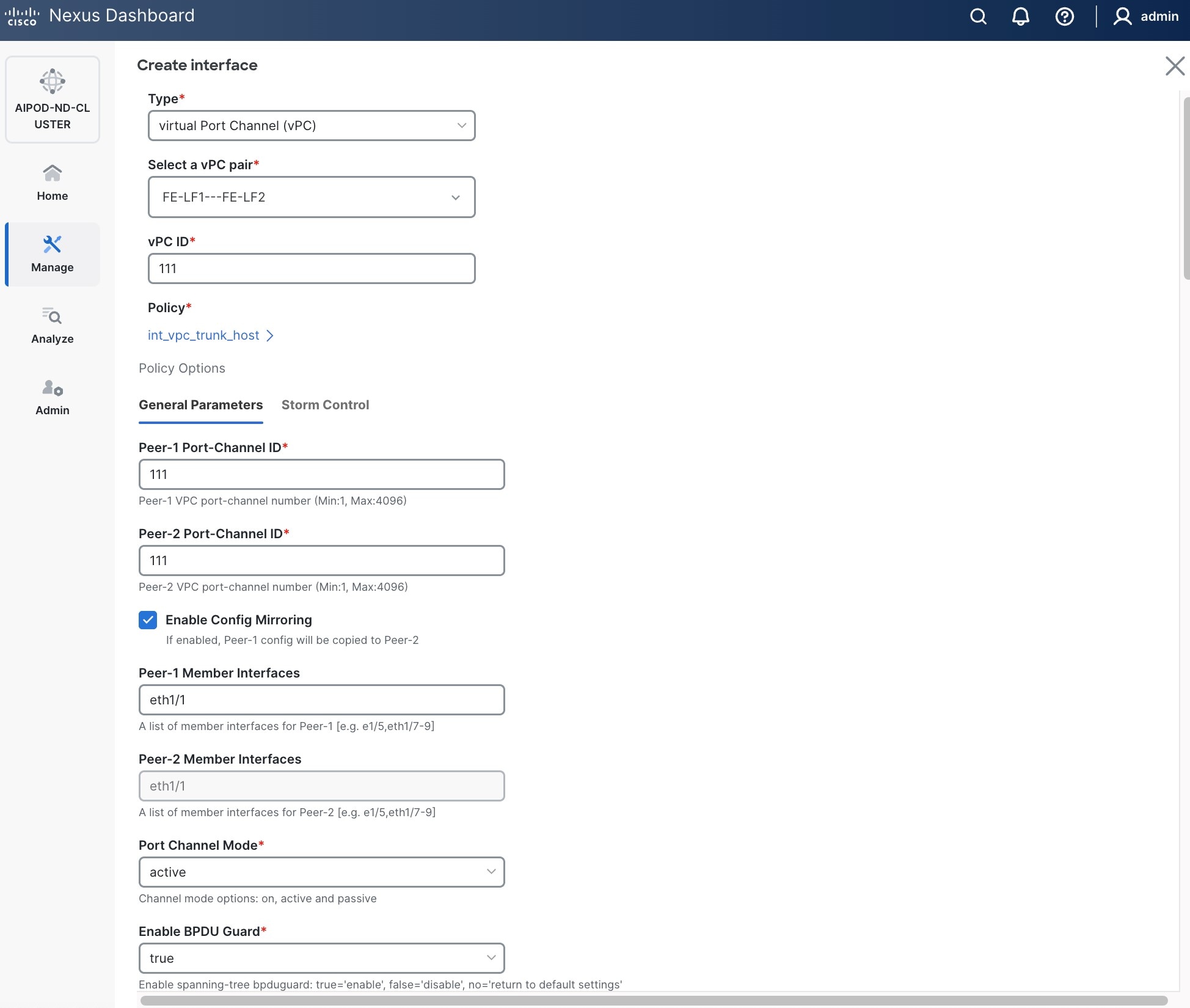

● Specify the TypeType of interface as virtual Port Channel (vPC)virtual Port Channel (vPC) from the drop-down list.

● For the Select a vPC pairSelect a vPC pair, select the compute leaf switch vPC pair from the drop-down list.

● Specify a vPC IDvPC ID for the firstfirst vPC to the UCS X-Direct (-A side-A side). Port Channel IDs from each switch to the first UCS node should match the vPC ID (see screenshot below).

● Leave the Policy as int_vpc_trunk_host.

● EnableEnable checkbox for Config MirroringConfig Mirroring to configure both vPC peer switches identically.

● Specify Peer-1 Member InterfacesPeer-1 Member Interfaces that connects to first UCS node.

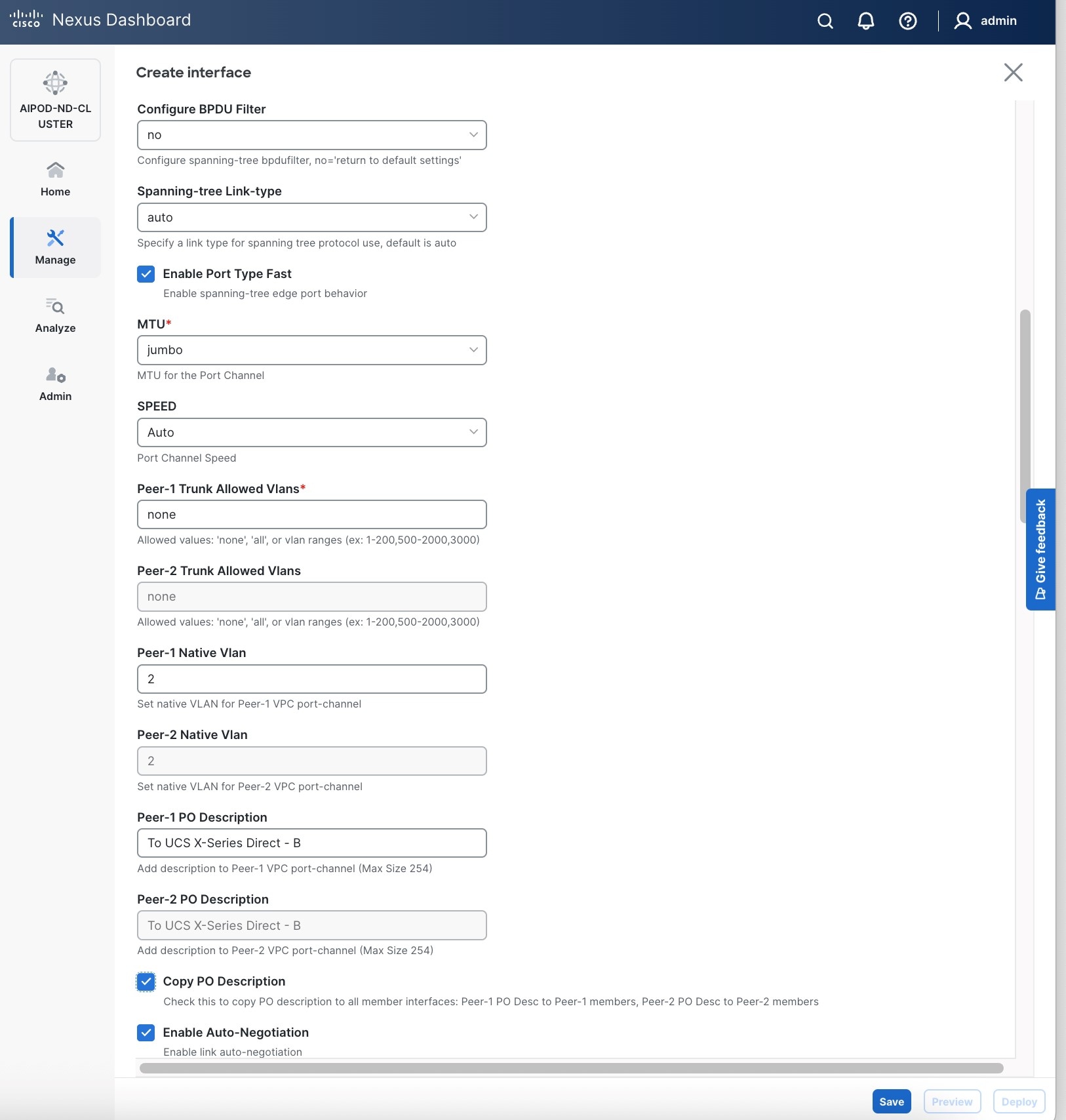

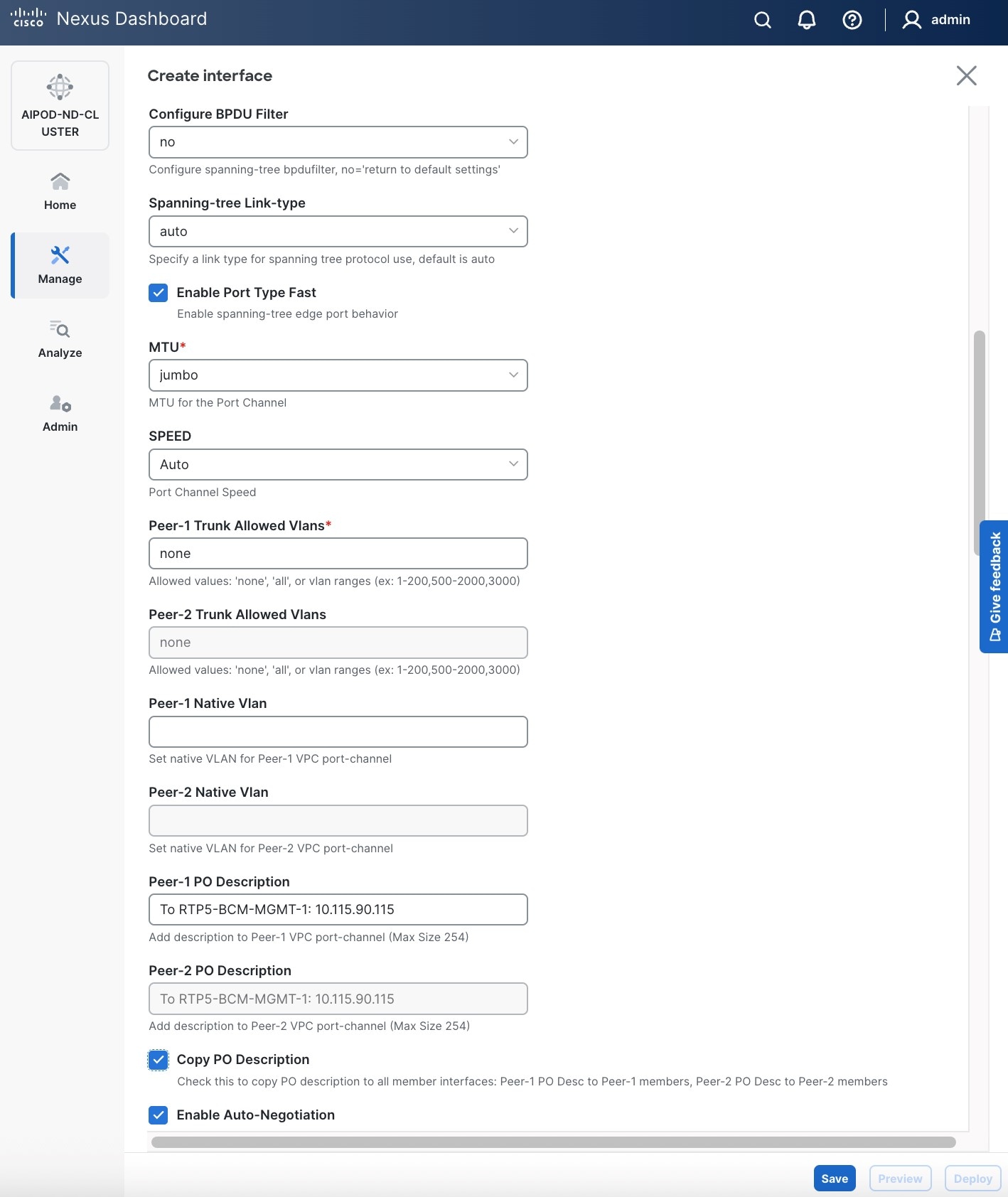

● Leave other fields as is.

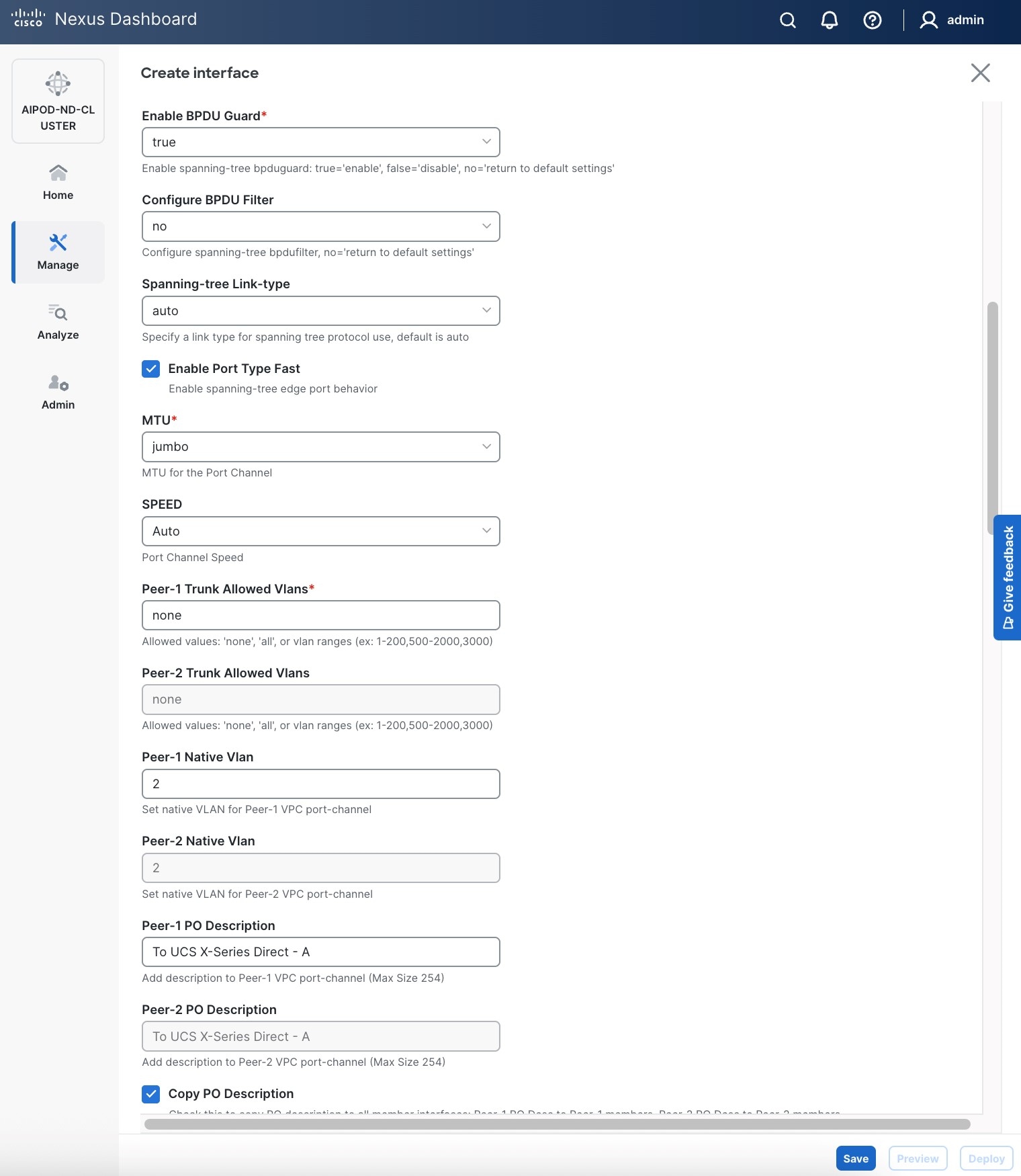

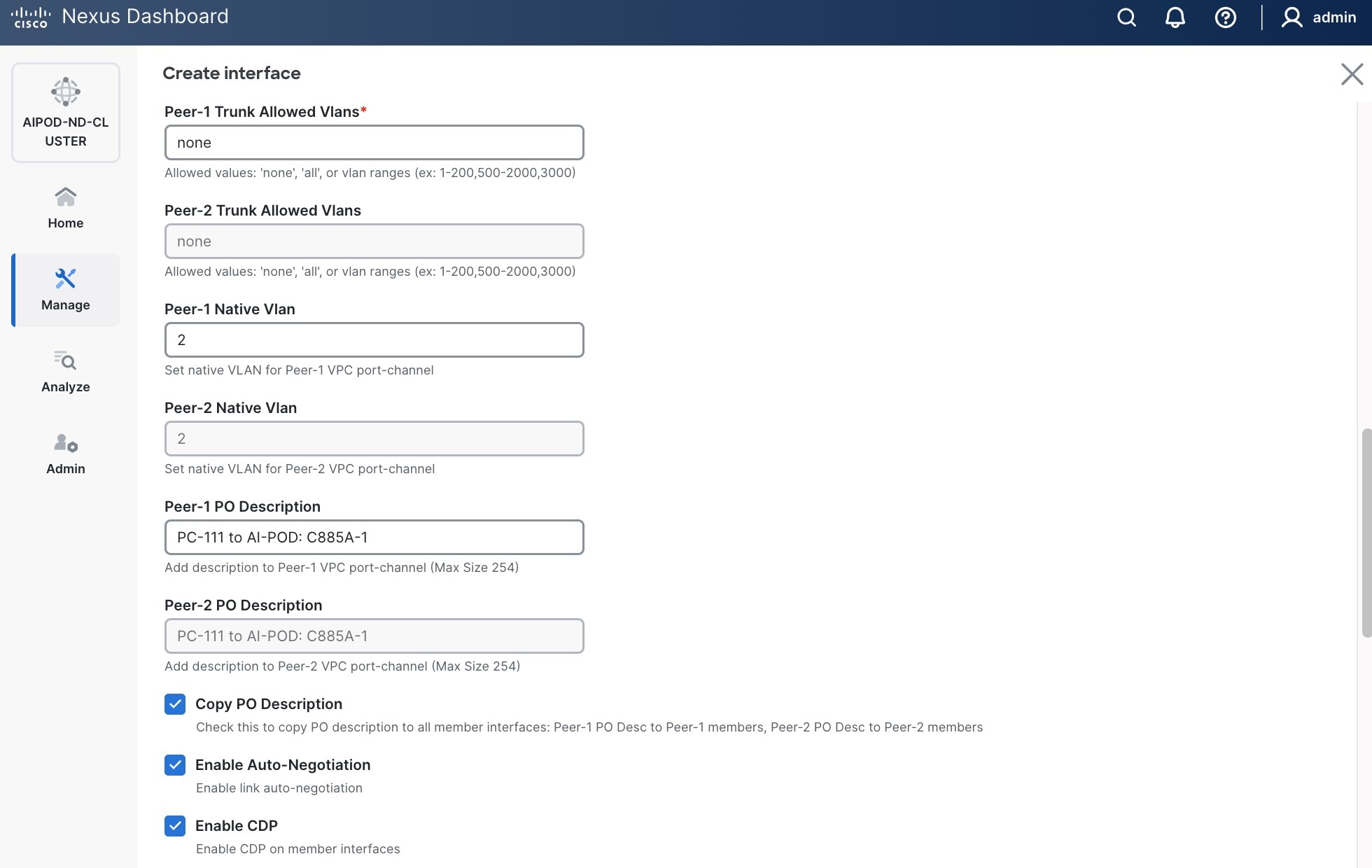

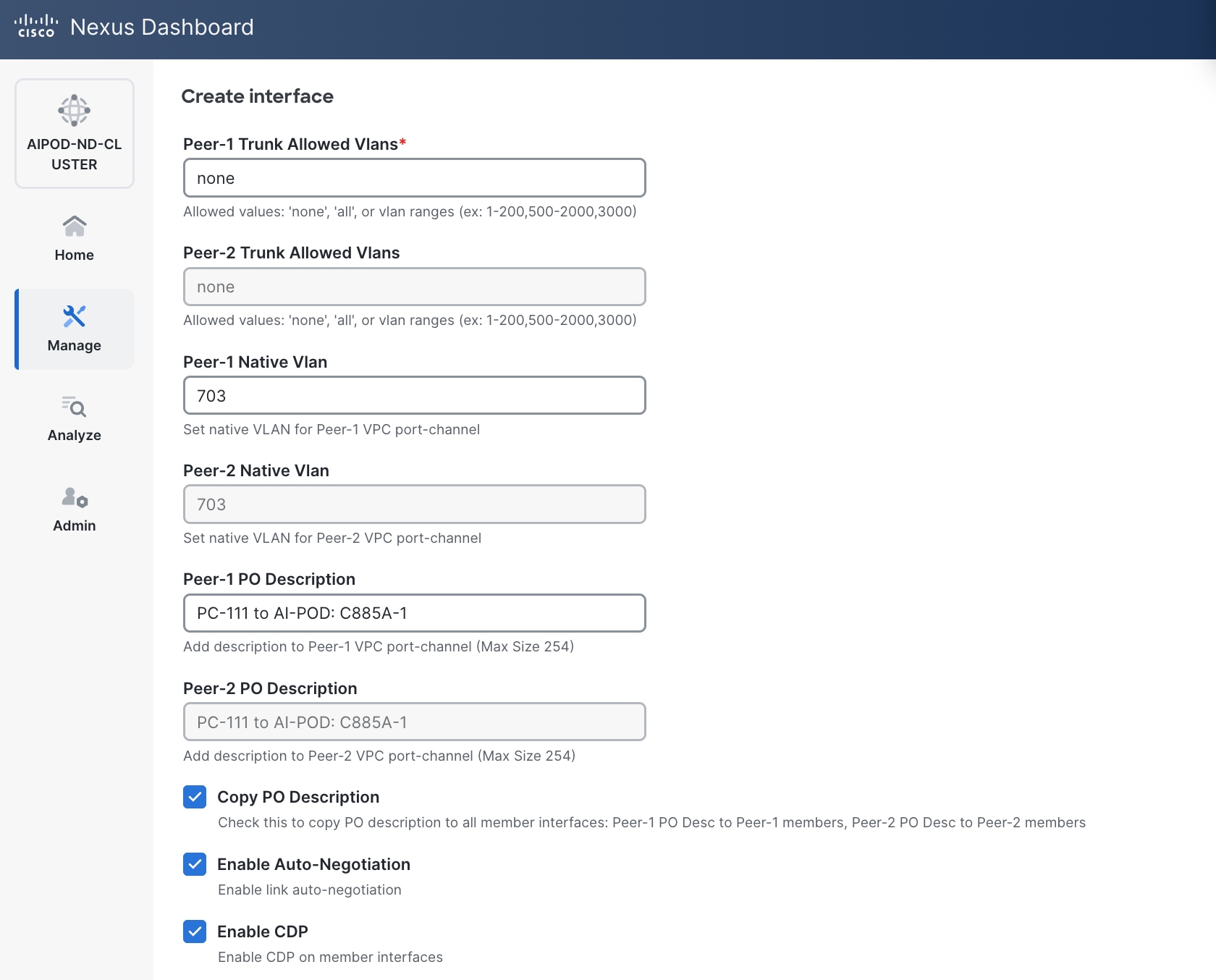

● Scroll down and fill remaining fields: Native VLANNative VLAN, Peer-1 PO Description, Peer-1 PO Description, and select the checkbox for Copy PO Description Copy PO Description to copy the description to second vPC peer’s Port Channel.

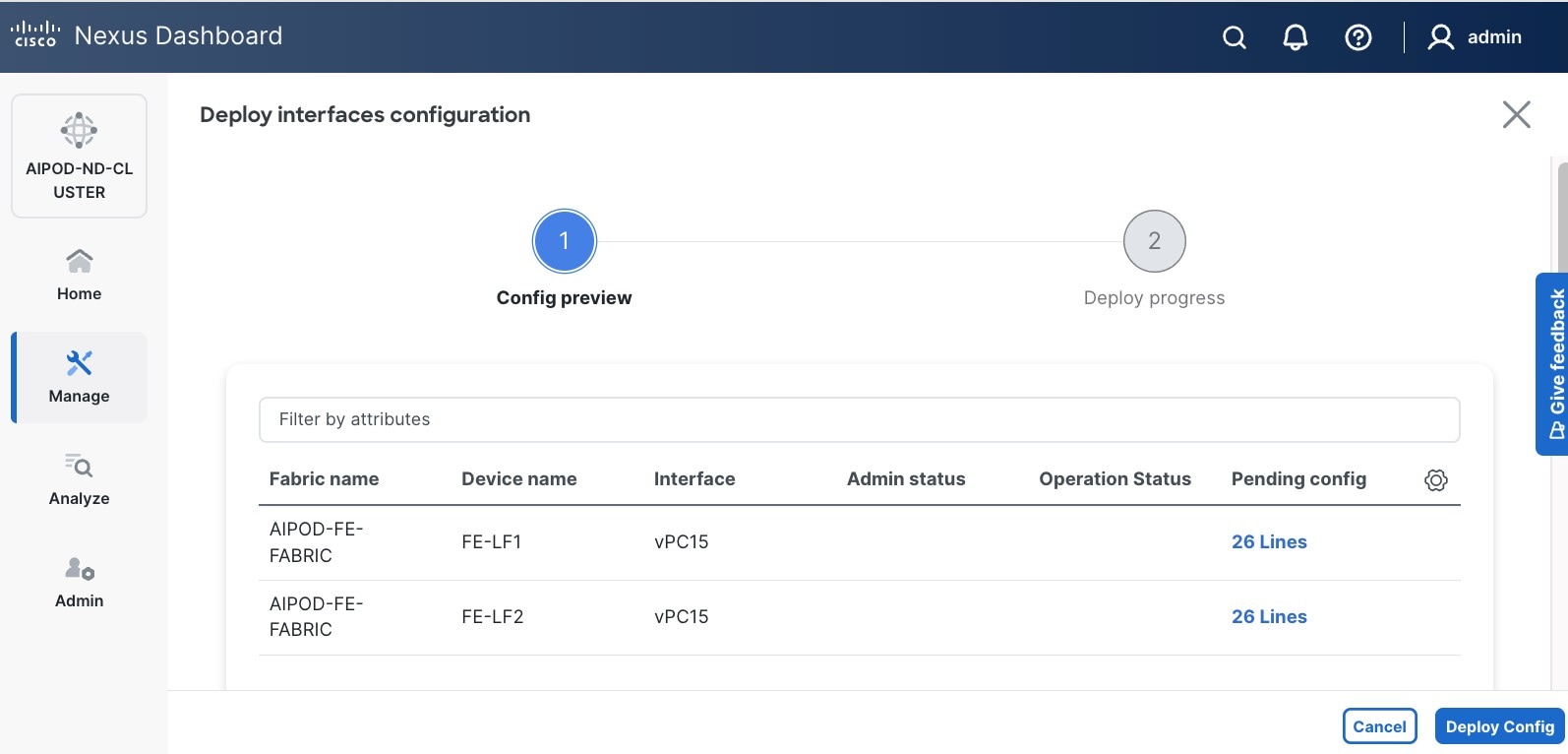

Step 6. Click Save.

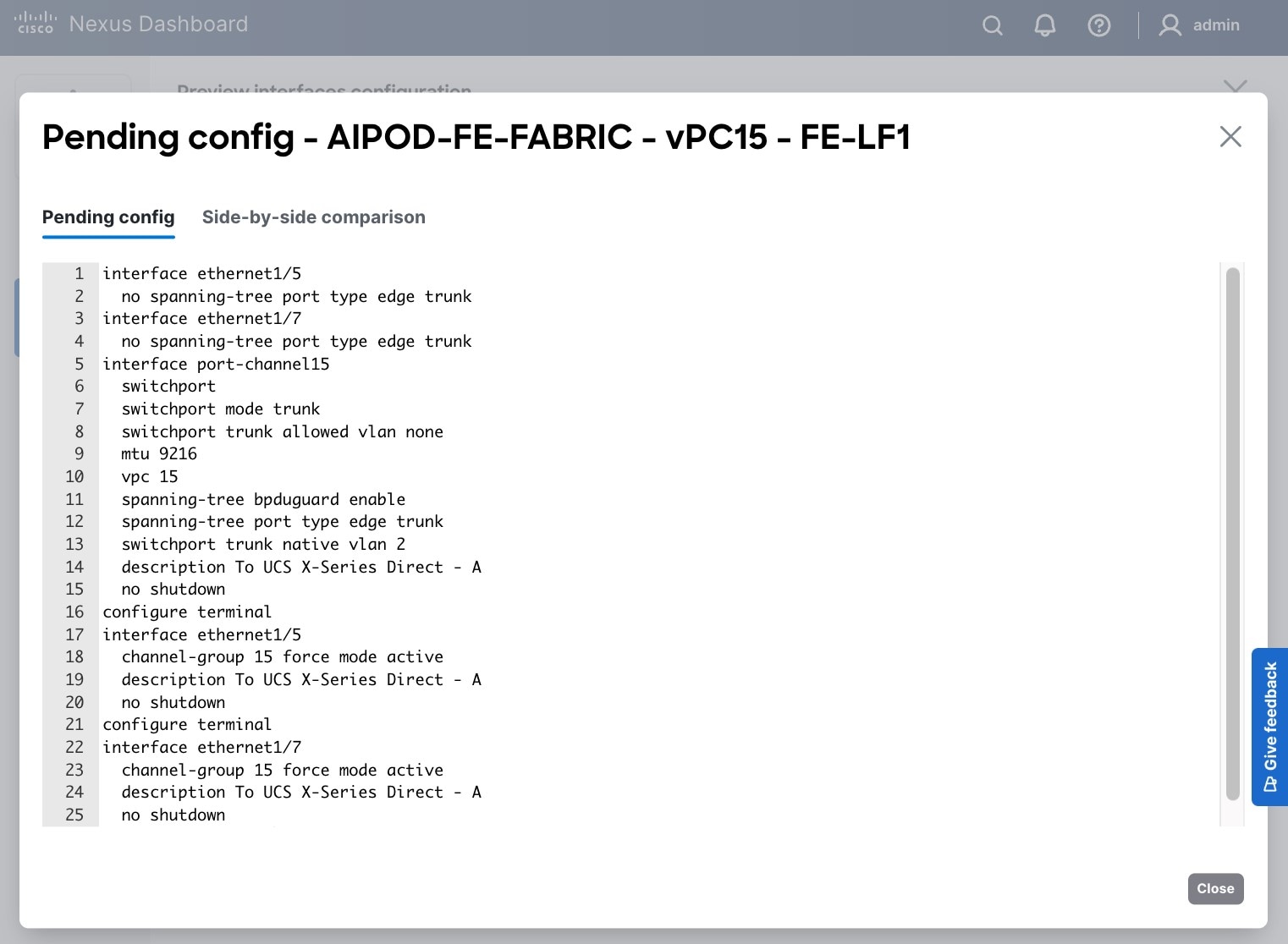

Step 7. Click Preview.

Step 8. Click Close, then click Cancel.

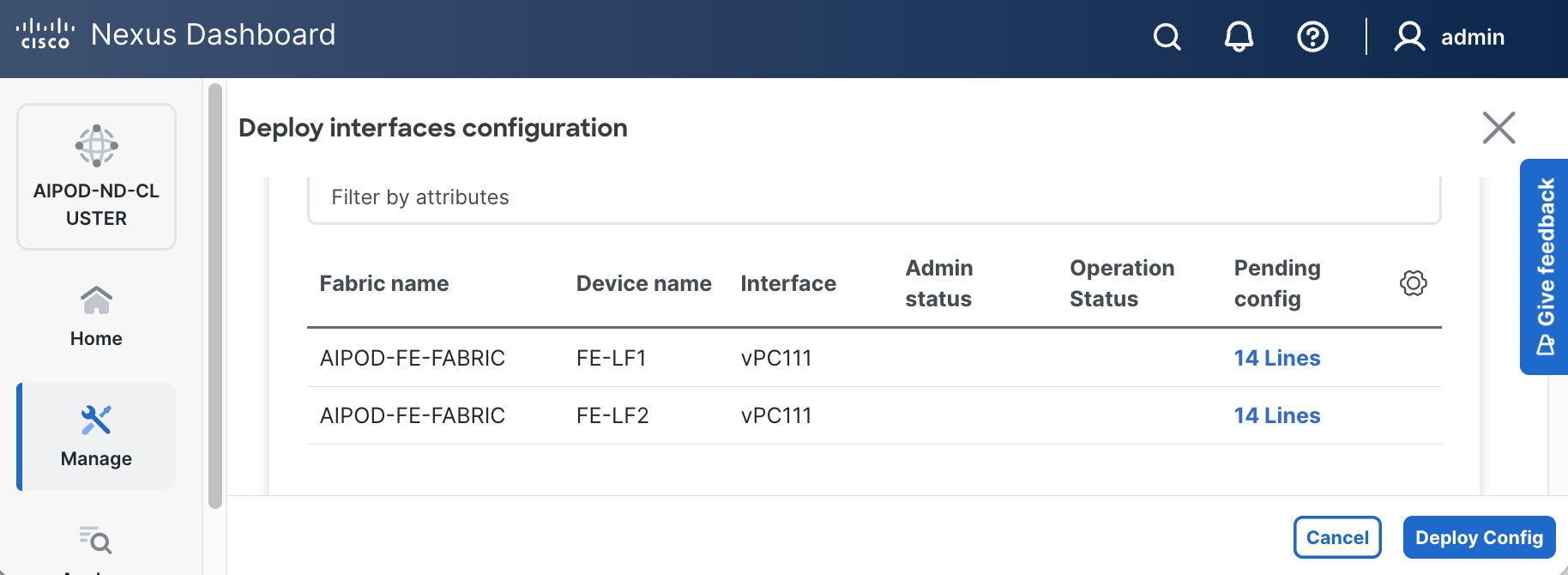

Step 9. Click Deploy. The Pending Config is the configuration shown in a previous step.

Step 10. Click Deploy Config.

Step 11. Verify that all the interfaces and port-channels are up on each switch in the vPC leaf pair that connects to the UCS X-Series Direct (-A side). It may take a few minutes for the vPC to go from Not discovered to consistent state.

Procedure 2. Deploy second vPC to Management UCS X-Direct

Step 1. Repeat the previous procedure for the second vPC to UCS X-Series Direct (-B side).

Step 2. Click Save.

Step 3. Click Deploy, followed by Deploy Config.

Step 4. Verify that all the interfaces and port-channels are up on each switch in the vPC leaf pair that connects to the UCS X-Series Direct (-B side). It may take a few minutes for the vPC to go from Not discovered to consistent state.

Enable Layer 2 Connectivity to UCS GPU Nodes from FE Fabric

To enable layer 2 connectivity to UCS GPU nodes, you will be configuring four vPCs, one per UCS C885A node. Each vPC will use one port on each switch in the compute leaf pair to connect to the UCS node.

Table 9. Setup Parameters for FE Fabric: Layer 2 Connectivity to UCS GPU Nodes

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| Leaf Switches |

FE-LF1, FE-LF2 |

|

| UCS Nodes |

4 x UCS C885A GPU Nodes, each dual-homed to FE-LF1 & FE-LF2 |

|

| Virtual Port Channel (vPC) |

To UCS C885As |

UCS GPU Nodes |

| vPC/PC1 - ID |

111 |

|

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/1 |

On each Leaf switch |

| vPC/PC2 – ID |

112 |

|

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/2 |

On each Leaf switch |

| vPC/PC3 - ID |

113 |

|

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/3 |

On each Leaf switch |

| vPC/PC4 – ID |

114 |

|

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/4 |

On each Leaf switch |

To enable Layer 2 connectivity to UCS C885A GPU nodes from the FE fabric, follow the procedures below.

Procedure 1. Deploy first vPC to first UCS C885A GPU node

Step 1. Use a web browser to navigate to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and navigate to Connectivity > Interfaces tab.

Step 4. Click the lower of the two Actions buttons and select Create interface.

Step 5. In the Create interface window:

● Specify the TypeType of interface as virtual Port Channel (vPC)virtual Port Channel (vPC) from the drop-down list.

● For the Select a vPC pairSelect a vPC pair, select the compute leaf switch VPC pair from the drop-down list.

● Specify a vPC IDvPC ID for the vPC to the first first UCS GPU node. Peer-1 and Peer-2 Port-Channel ID should match that of the vPC ID.

● Leave the Policy as int_vpc_trunk_host.

● Enable checkbox for Config Mirroring.

● Specify Peer-1 Member InterfacesPeer-1 Member Interfaces that connects to first UCS node.

● Specify Peer-1 Native Vlan.

● Specify Peer-1 PO Description.

● EnableEnable the checkbox for Copy PO DescriptionCopy PO Description to copy PO description to all member interfaces.

Step 6. Additional configuration changes can be made later as needed. Click Save.

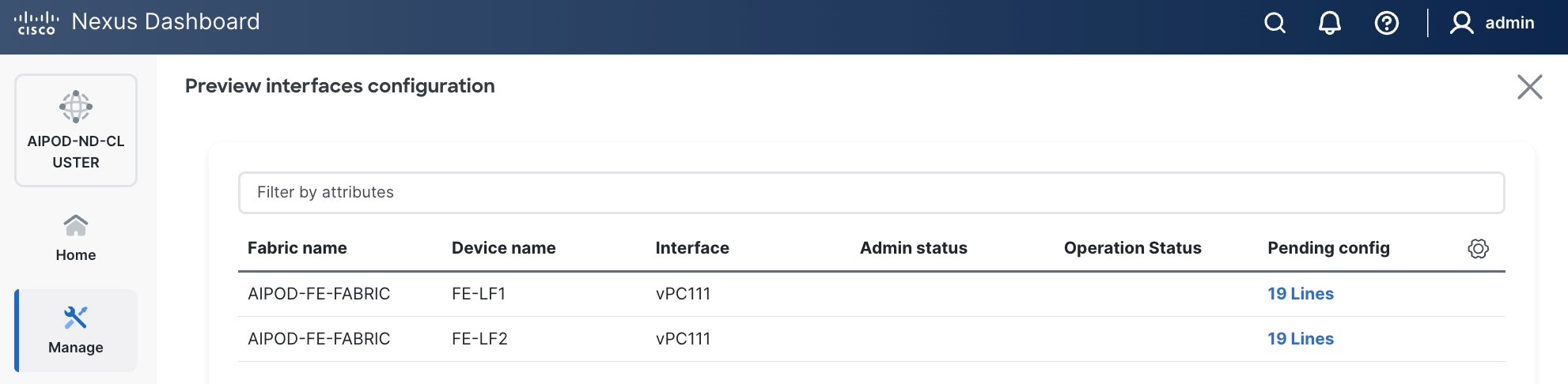

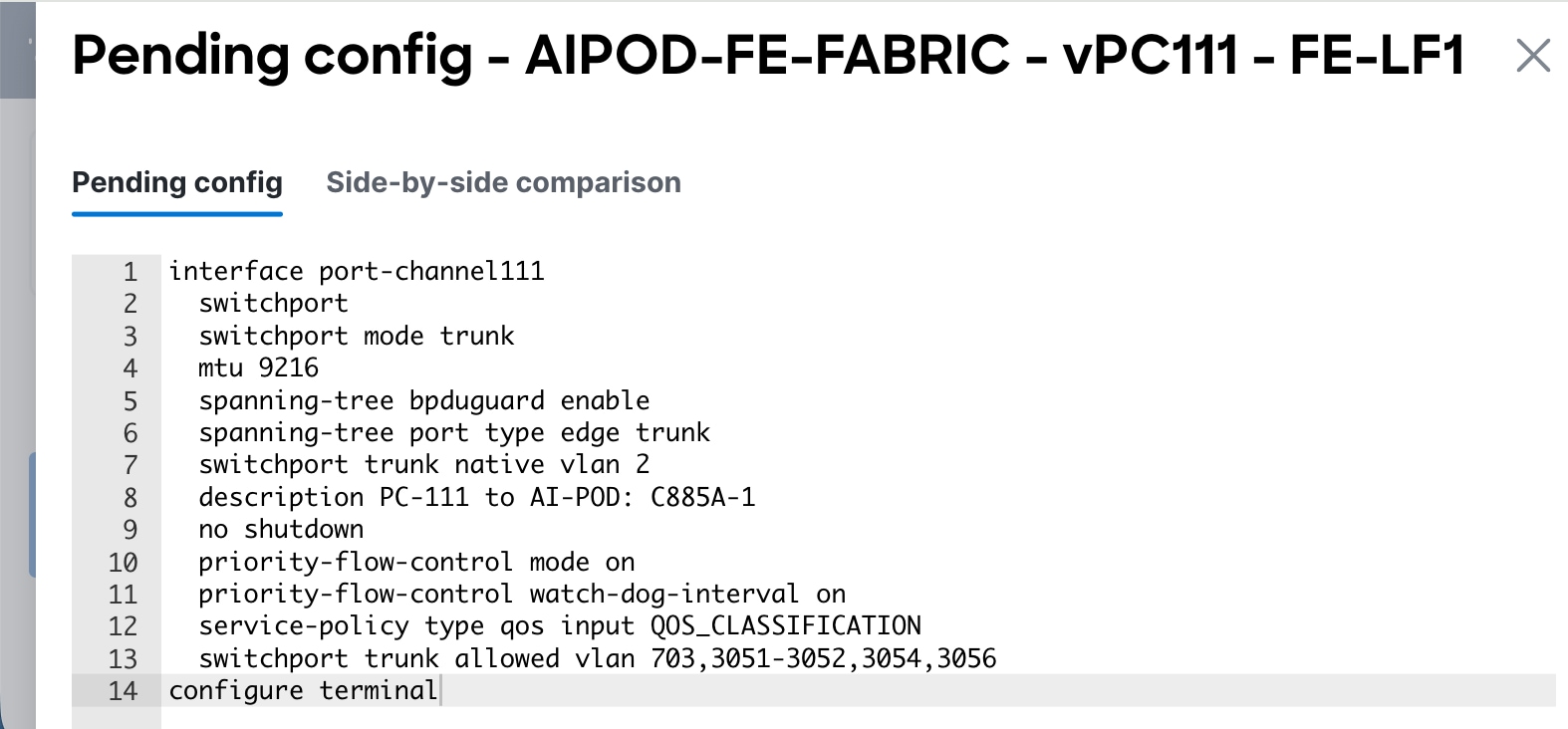

Step 7. Click Preview to view the Pending config changes.

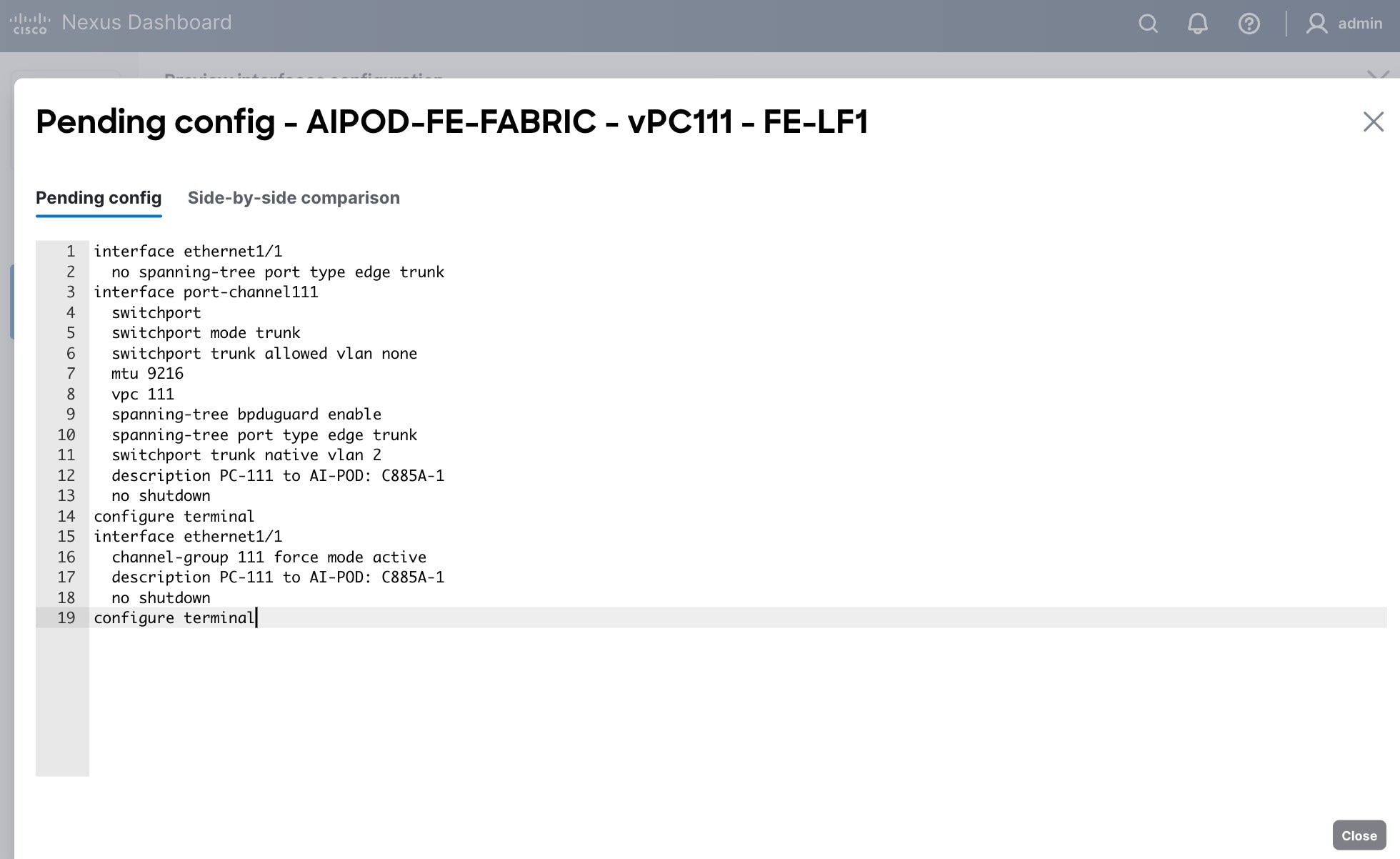

Step 8. Click the Pending Config for each switch to see the configuration.

Step 9. Click the X in the top right corner and select Deploy and Deploy config to deploy the Pending config changes.

Step 10. Click Close when deployment completes successfully.

Step 11. Verify that all the interfaces and port-channel is up on each switch in the leaf switch pair that connects to the UCS node. It may take a few minutes for the vPC to go from Not discovered to consistent state.

Procedure 2. Deploy vPCs to remaining UCS C885A GPU nodes

Step 1. Repeat the previous procedure to provision layer 2 connectivity from the compute/management leaf switches to the remaining 3 UCS nodes in the cluster.

Step 2. Verify that all the interfaces and port-channel is up on each switch in the leaf switch pair that connects to the UCS nodes. It may take a few minutes for the vPC to go from Not discovered to consistent state.

(Ubuntu) Enable Layer 2 Connectivity to NVIDIA BCM Nodes

If running Ubuntu on the Cisco UCS C885A M8 nodes under NVIDIA BCM, to enable Layer 2 connectivity to the BCM (UCS) node(s) from the FE fabric, you will be configuring two vPCs from the same BCM node: one to compute/management leaf pair and another storage leaf pair.

Table 10. Setup Parameters for FE Fabric: Layer 2 Connectivity to NVIDIA BCME Nodes

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| Virtual Port Channel (vPC) |

To BCME Node |

Management/Control/Workload Management Node |

| vPC/PC1 - ID |

17 |

|

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/21 |

|

| vPC/PC1 - ID |

18 |

|

| vPC Pair |

FE-SLF1, FE-SLF2 |

|

| Ports |

1/24 |

|

To enable Layer 2 connectivity to the BCM (UCS) node(s) from the FE fabric, follow the procedures below.

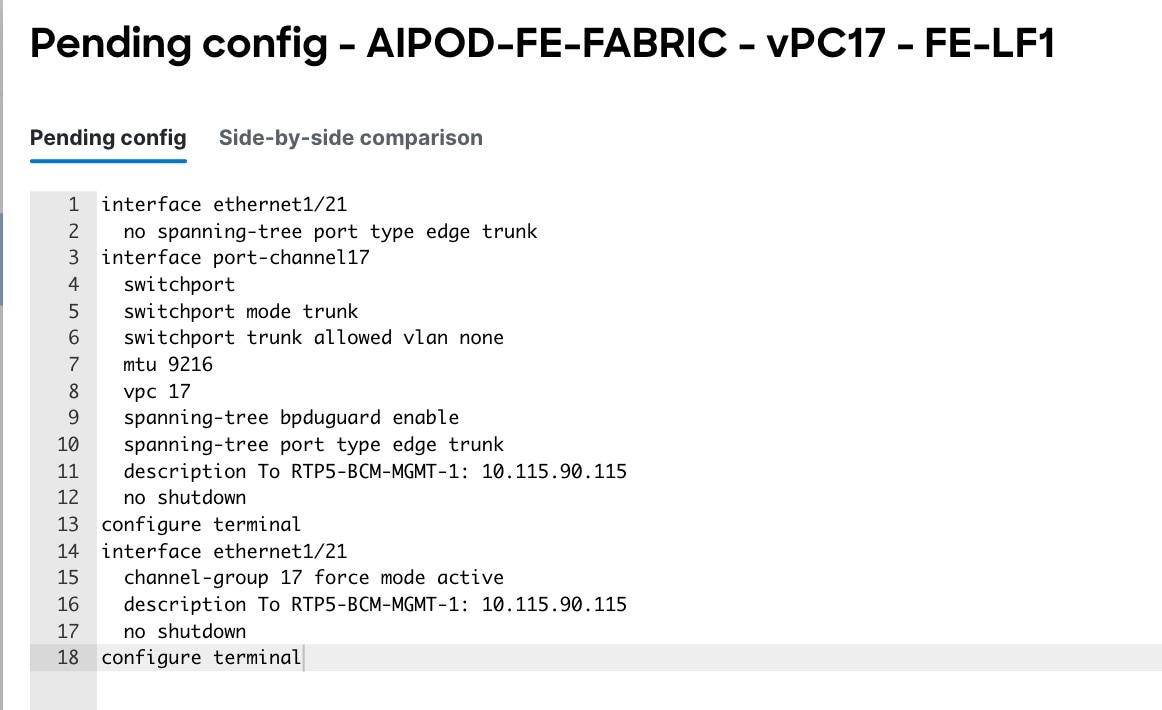

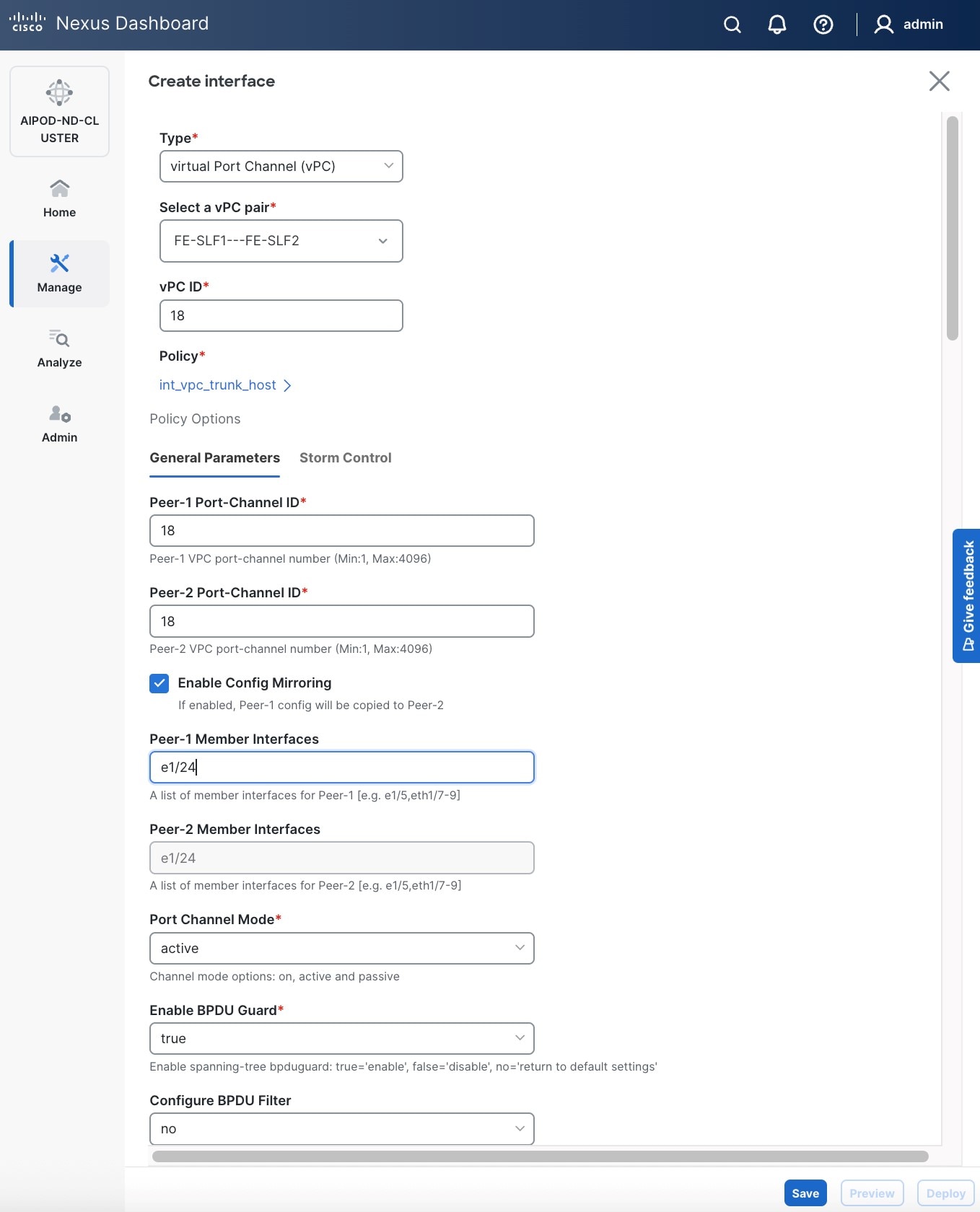

Procedure 1. Deploy first vPC to BCM node from compute leaf switch pair

Step 1. Use a web browser to navigate to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and navigate to Connectivity > Interfaces tab.

Step 4. Click the lower of the two Actions buttons and select Create interface.

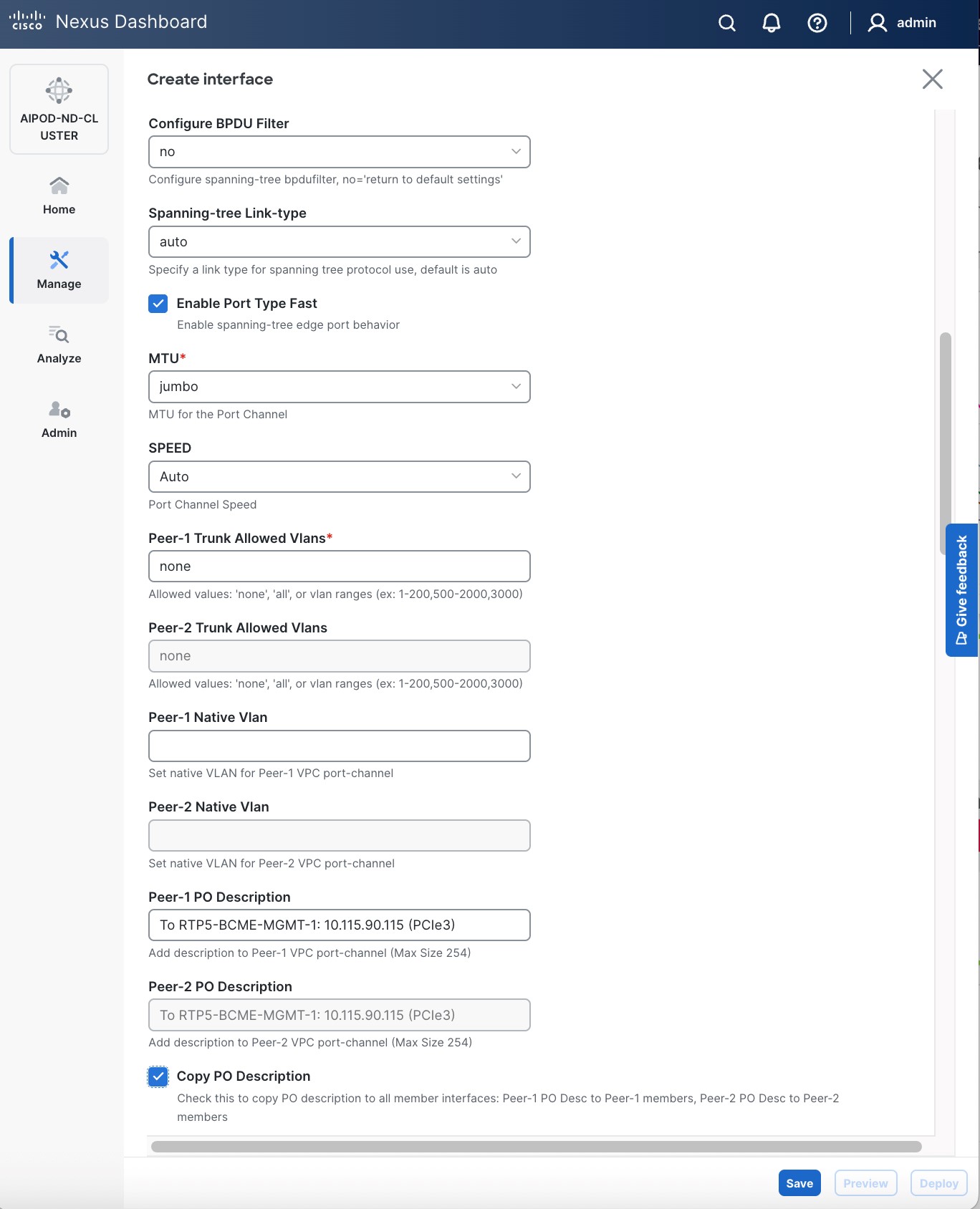

Step 5. In the Create interface window:

● Specify the TypeType of interface as virtual Port Channel (vPC)virtual Port Channel (vPC) from the drop-down list.

● For the Select a vPC pairSelect a vPC pair, select the leaf switch pair from the drop-down list.

● Specify a vPC IDvPC ID for the firstfirst vPC to the BCME nodeBCME node. Port Channel IDs from each switch to the first UCS node should match the vPC ID (see screenshot below).

● Leave the Policy as int_vpc_trunk_host.

● EnableEnable checkbox for Config MirroringConfig Mirroring to configure both vPC peer switches identically.

● Specify Peer-1 Member Interfaces Peer-1 Member Interfaces that connects to the BCME node.

● Scroll down and fill remaining fields: Native VLAN (optional), Peer-1 PO Description, Copy PO Description.

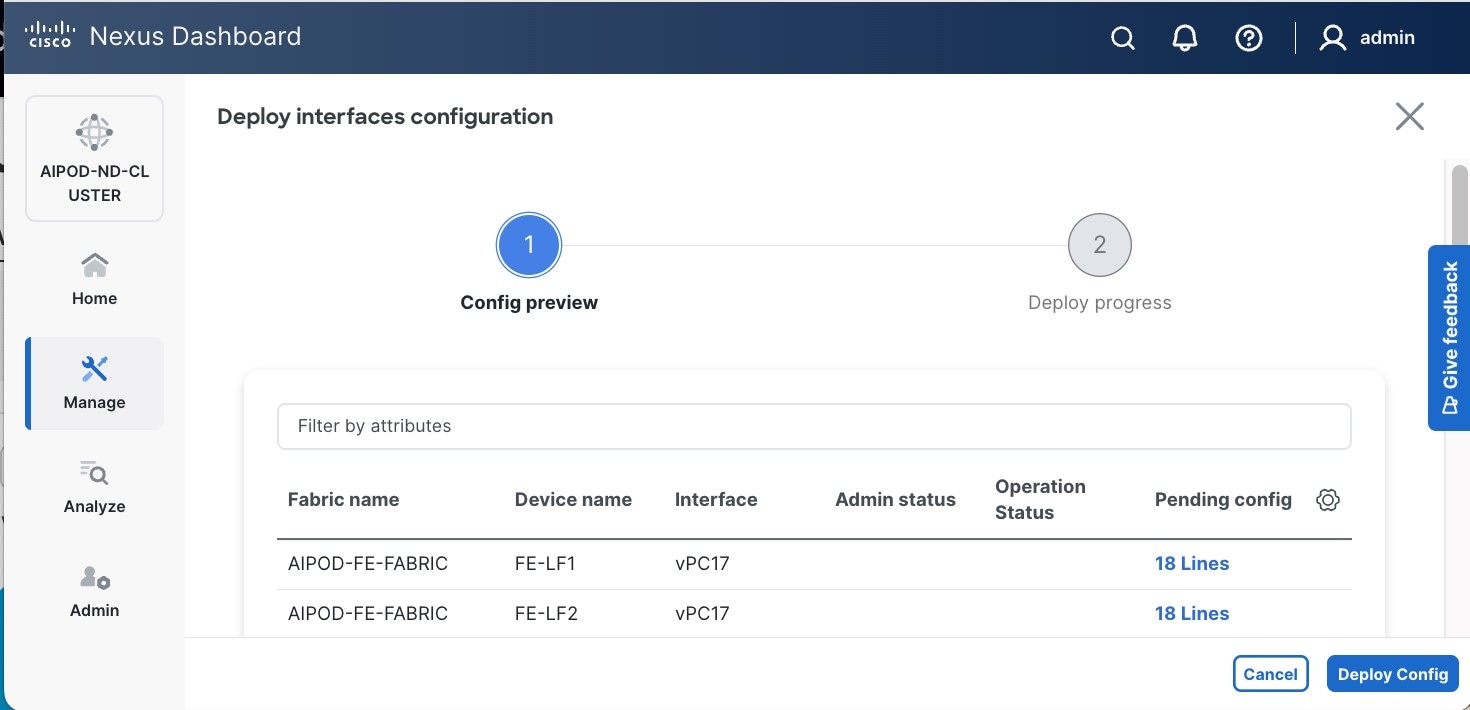

Step 6. Click Save.

Step 7. Click Preview.

Step 8. Click Close, then click Cancel.

Step 9. Click Deploy. The Pending Config is the configuration shown in a previous step.

Step 10. Click Deploy Config.

Step 11. Verify that all the interfaces and port-channels are up on each switch in the vPC leaf pair that connects to the BCME node. It may take a few minutes for the vPC to go from Not discovered to consistent state.

Procedure 2. Deploy second vPC to BCM node from storage leaf switch pair

Step 1. Repeat the previous procedure for the second vPC from storage leaf pair to BCME node.

Step 2. Click Save.

Step 3. Click Deploy, then click Deploy Config.

Step 4. Verify that all the interfaces and port-channels are up on each switch in the vPC leaf pair that connects to the BCM node. It may take a few minutes for the vPC to go from Not discovered to consistent state.

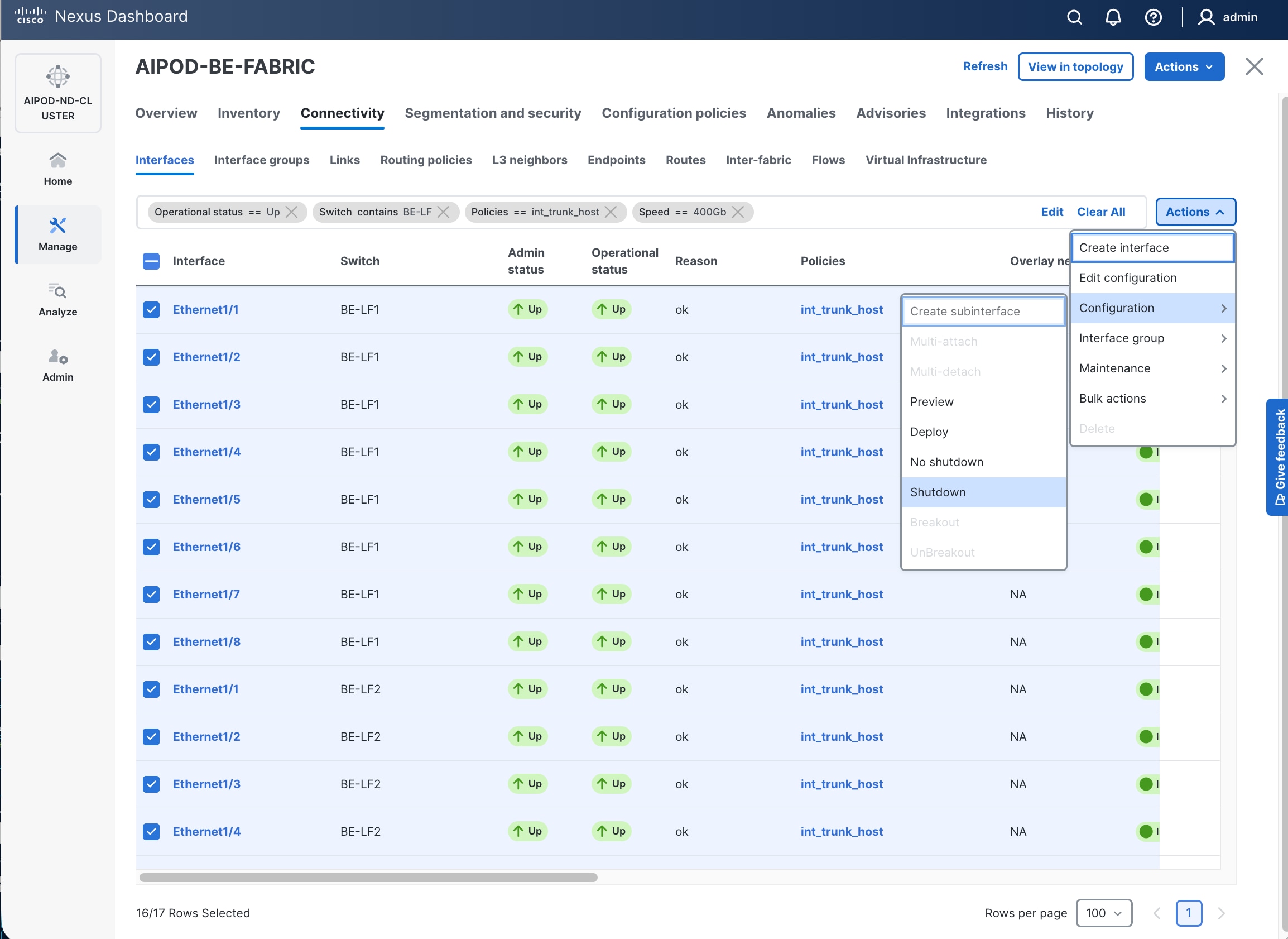

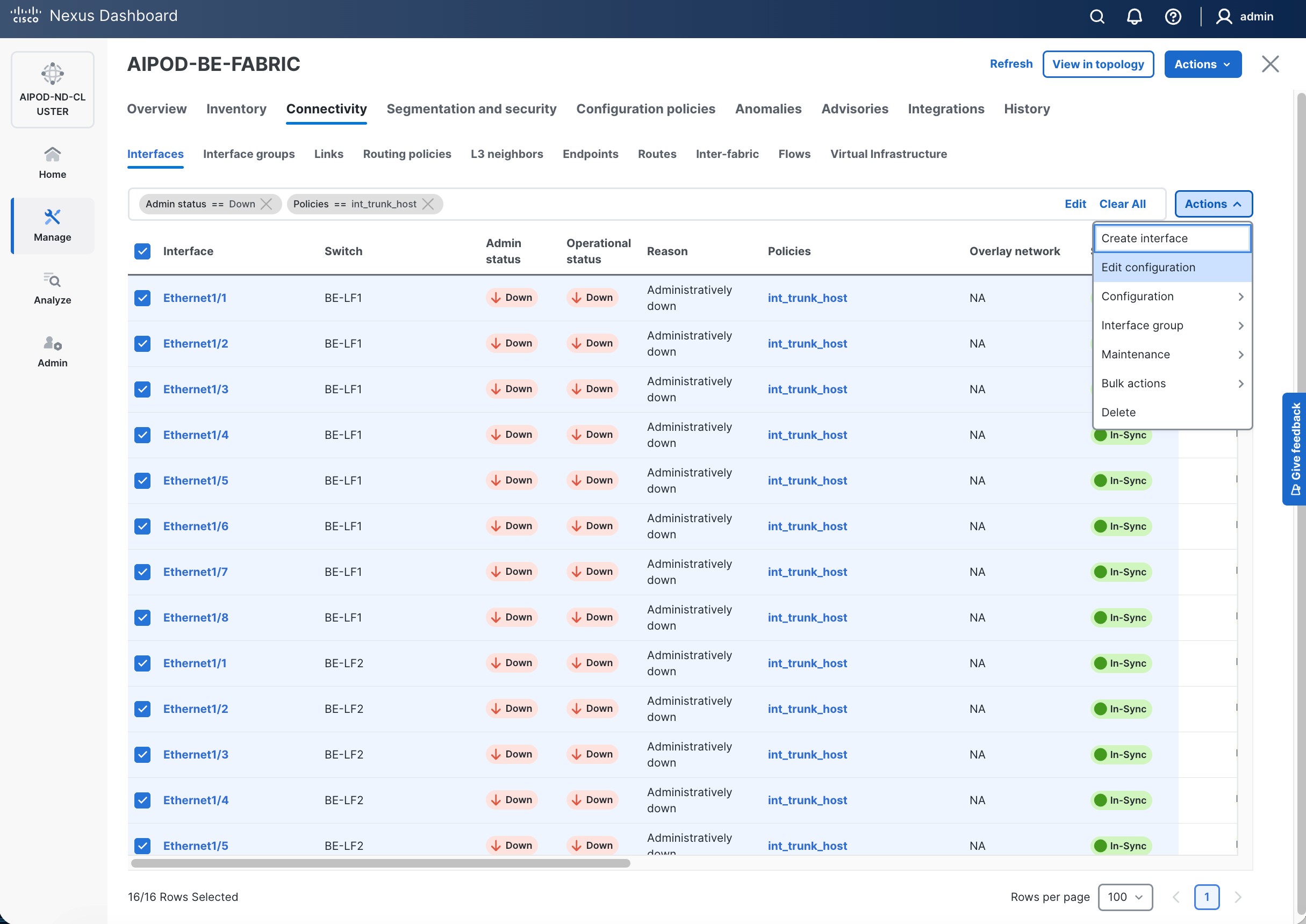

(Ubuntu) Enable Layer 2 Connectivity to UCS GPU Nodes from FE Fabric

If running Ubuntu on the Cisco UCS C885A M8 nodes under NVIDIA BCM, to enable Layer 2 connectivity to UCS C885A GPU nodes from the FE fabric, you will be configuring four vPCs, one per UCS C885A node. Each vPC will use one port on each switch in the compute leaf pair to connect to the UCS node.

If running OpenShift on the Cisco UCS C885A M8 nodes, follow this procedure but set the native VLAN to 2 below instead of 703.

Table 11. Setup Parameters for FE Fabric: Layer 2 Connectivity to UCS GPU Nodes

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| Leaf Switches |

FE-LF1, FE-LF2 |

|

| UCS Nodes |

4 x UCS C885A GPU Nodes |

Each node is dual-homed to FE-LF1 & FE-LF2 |

| Virtual Port Channel (vPC) |

To UCS C885As |

UCS GPU Nodes |

| vPC/PC1 - ID |

111 |

To UCS C885A-1 |

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/1 |

On each Leaf switch |

| vPC/PC2 – ID |

112 |

To UCS C885A-2 |

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/2 |

On each Leaf switch |

| vPC/PC3 - ID |

113 |

To UCS C885A-3 |

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/3 |

On each Leaf switch |

| vPC/PC4 – ID |

114 |

To UCS C885A-4 |

| vPC Pair |

FE-LF1, FE-LF2 |

|

| Ports |

1/4 |

On each Leaf switch |

To enable Layer 2 connectivity to UCS C885A GPU nodes from the FE fabric, follow the procedures below. You will be configuring four vPCs, one per UCS C885A node. Each vPC will use one port on each switch in the compute leaf pair to connect to the UCS node.

Procedure 1. Deploy first vPC to first UCS C885A GPU node

Step 1. Use a web browser to navigate to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and navigate to Connectivity > Interfaces tab.

Step 4. Click the lower of the two Actions buttons and select Create interface.

Step 5. In the Create interface window:

● Specify the TypeType of interface as virtual Port Channel (vPC)virtual Port Channel (vPC) from the drop-down list.

● For the Select a vPC pairSelect a vPC pair, select the compute leaf switch VPC pair from the dropdown list.

● Specify a vPC IDvPC ID for the vPC to the first first UCS GPU node. Peer-1 and Peer-2 Port-Channel ID should match that of the vPC ID.

● Leave the Policy as int_vpc_trunk_host.

● Enable checkbox for Config Mirroring.

● Specify Peer-1 Member InterfacesPeer-1 Member Interfaces that connects to first UCS node.

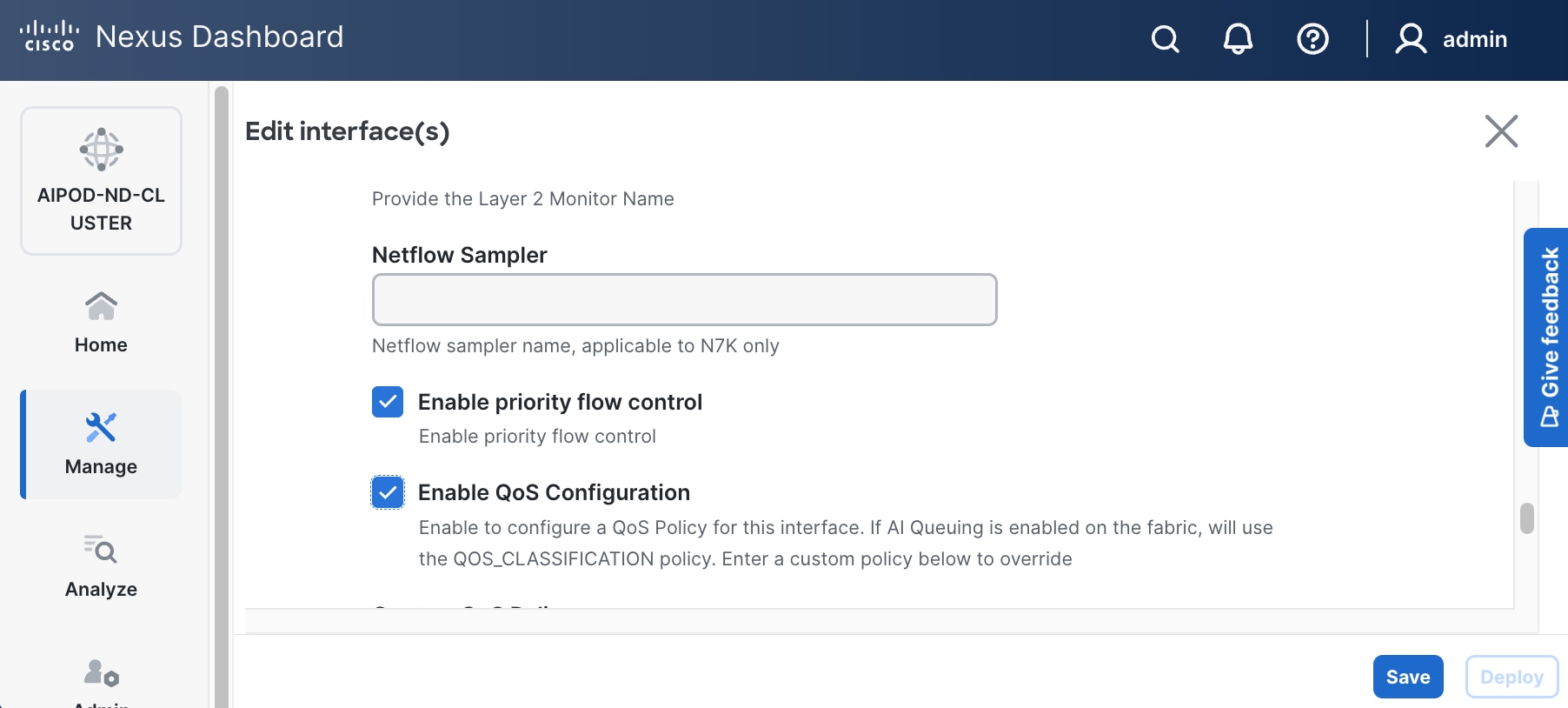

● Specify Peer-1 Native Vlan. Peer-1 Native Vlan. – If running OpenShift on the C885A nodes, set the Peer-1 Native VLAN to 2

● Specify Peer-1 PO Description.

● EnableEnable checkbox for Copy PO DescriptionCopy PO Description to copy PO description to all member interfaces.

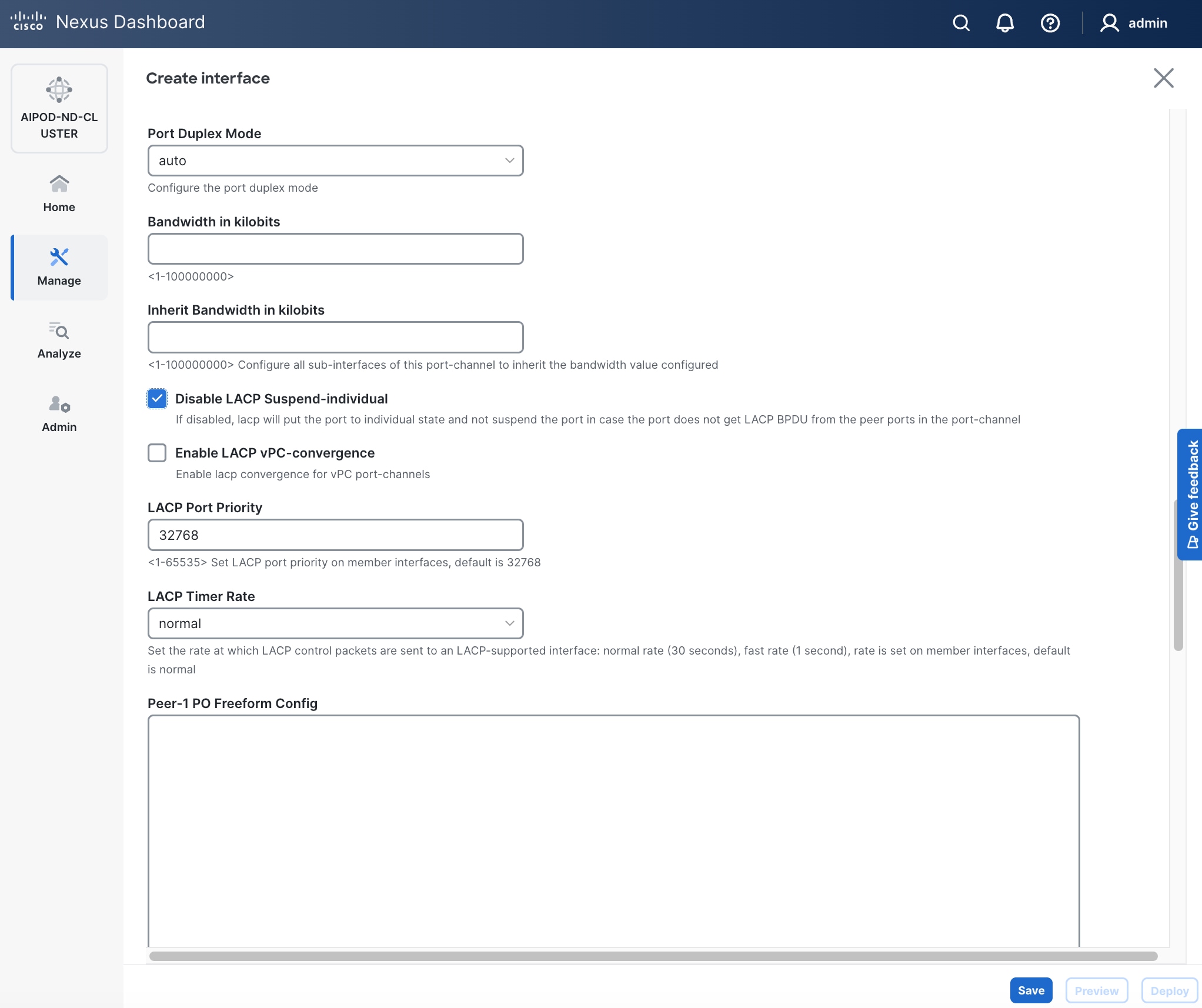

● Enable checkbox for Disable LACP Suspend-individual Disable LACP Suspend-individual – If running OpenShift on the C885A nodes, do not select this checkbox.

● Leave everything else as is. Additional configuration changes can be made later as needed.

Step 6. Click Save.

Step 7. Click Preview.

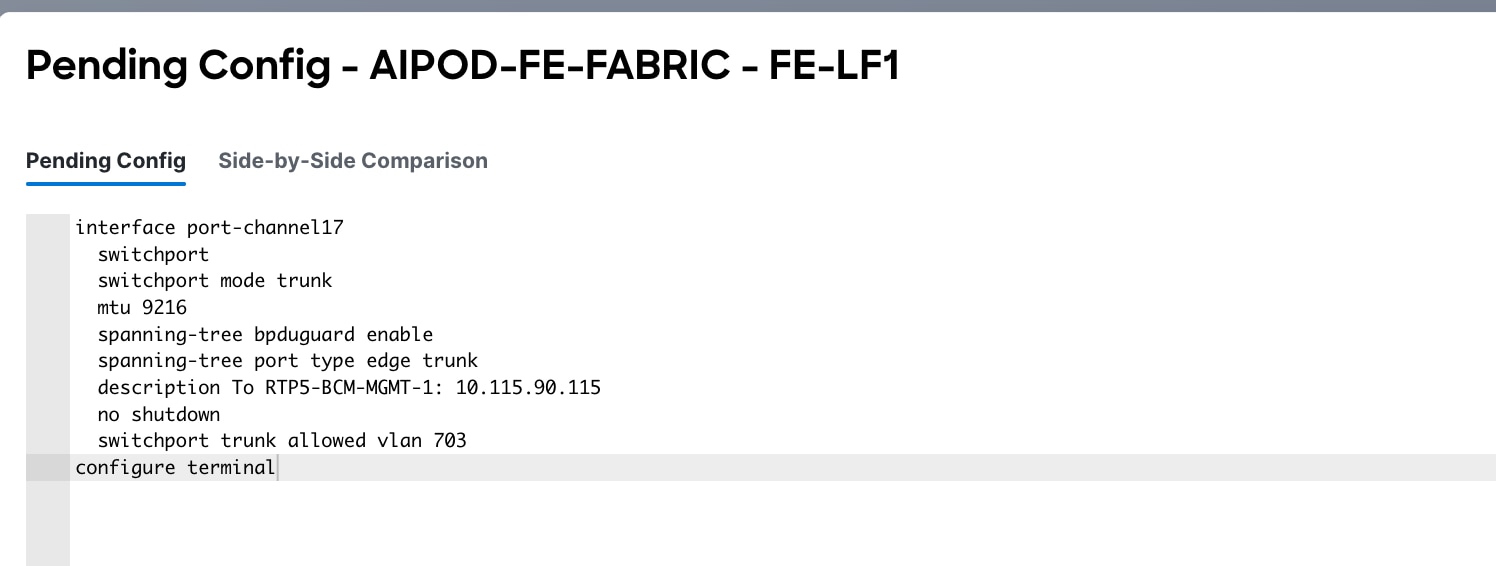

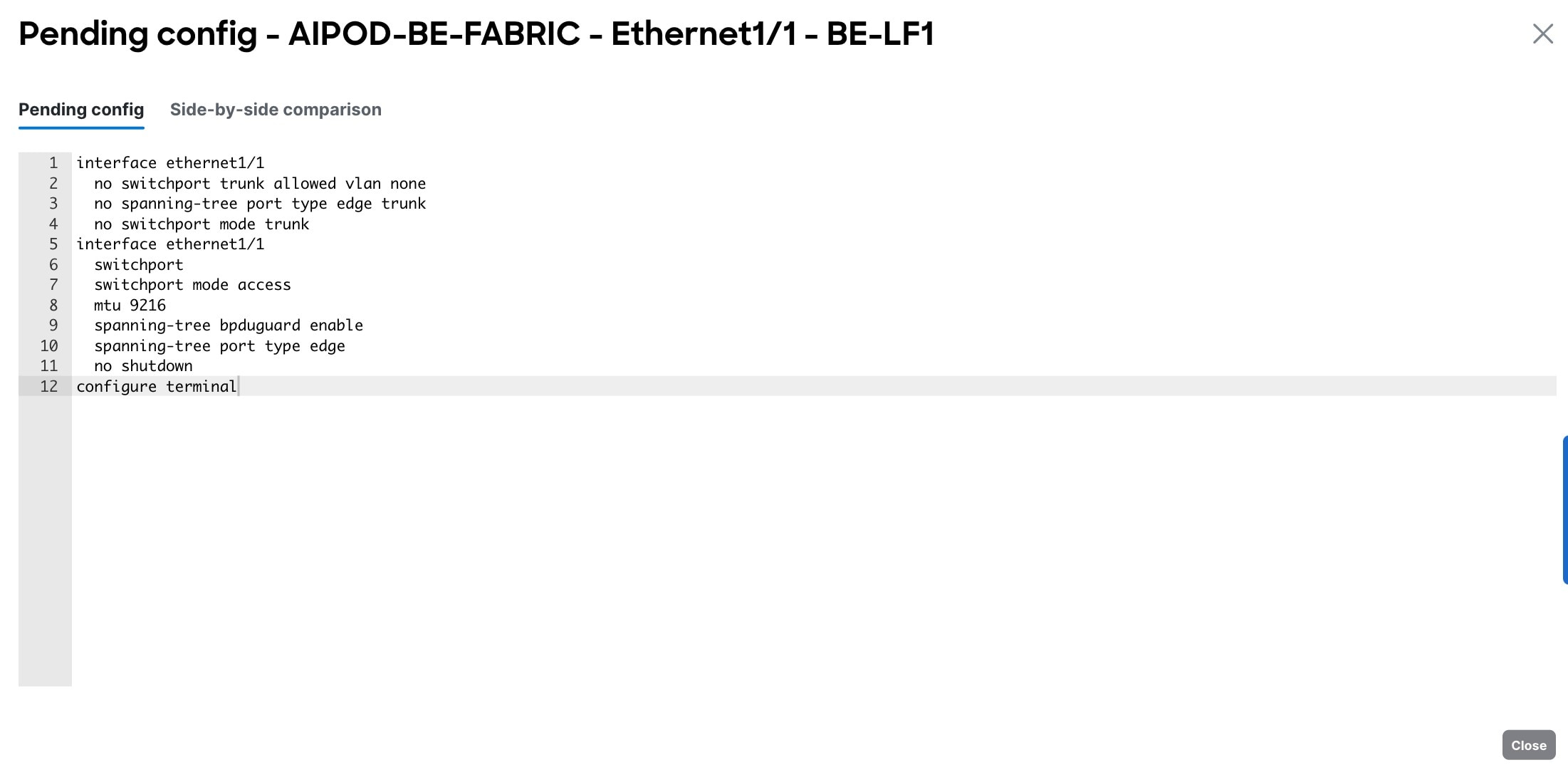

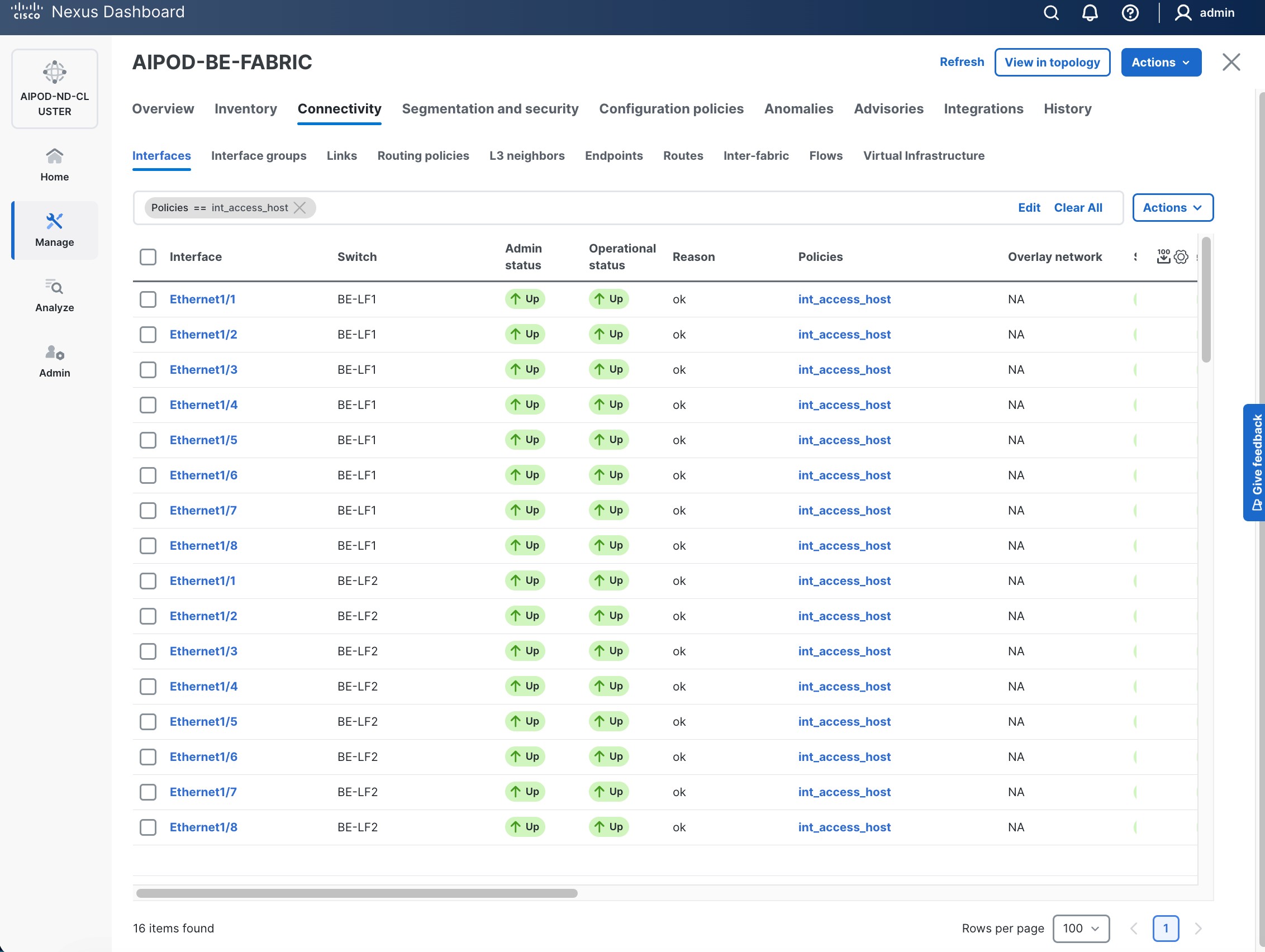

Step 8. To view the Pending config changes, click the Pending Config column for each switch (X lines) to see the configuration. The configuration is provided as a reference from one leaf switch.

Step 9. Click the X in the top right corner and select Deploy and Deploy config to deploy the Pending config changes.

Step 10. Click Close when deployment completes successfully.

Step 11. Verify that all the interfaces and port-channel is up on each switch in the leaf switch pair that connects to the UCS node. It may take a few minutes for the vPC to go from Not discovered to consistent state.

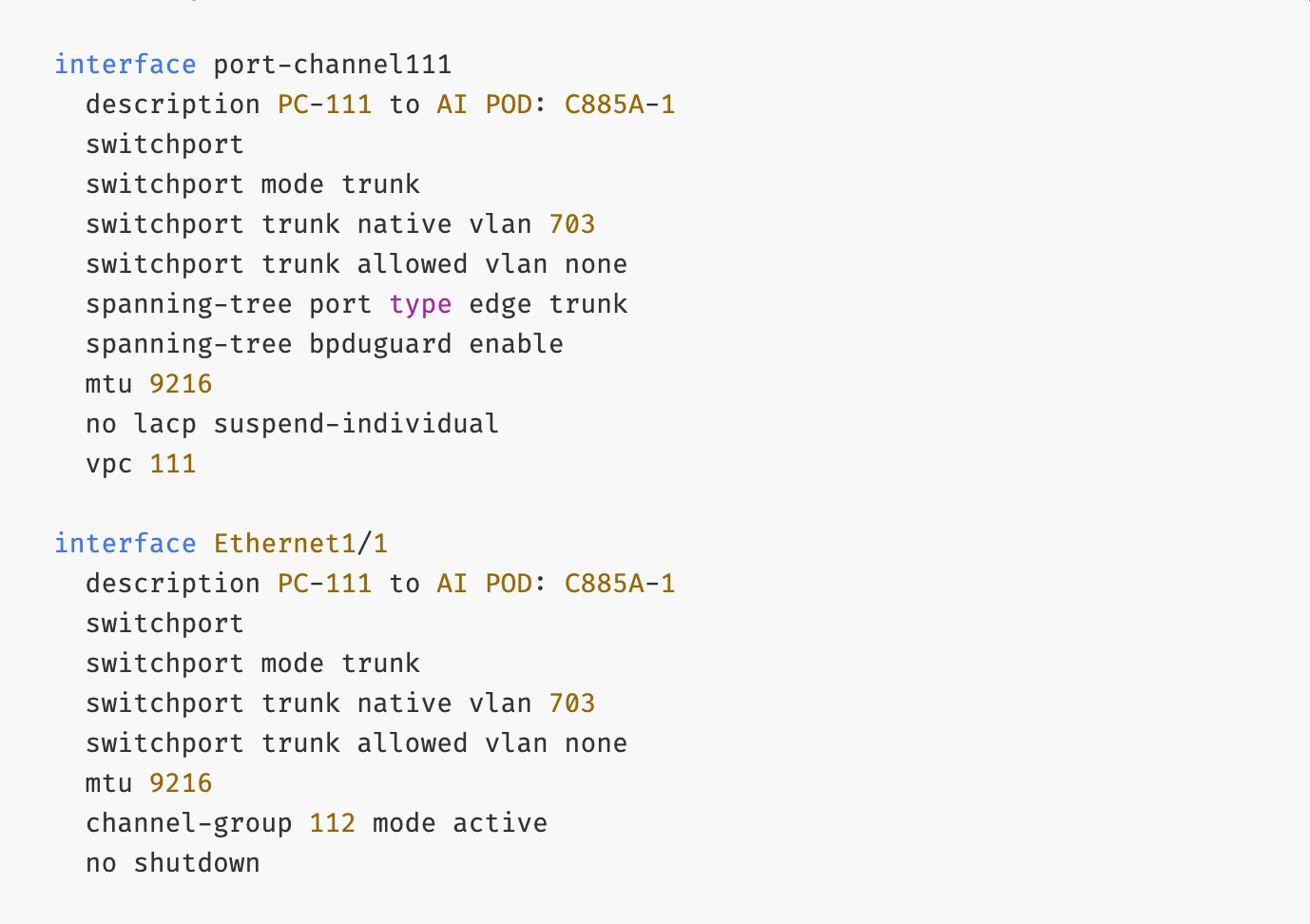

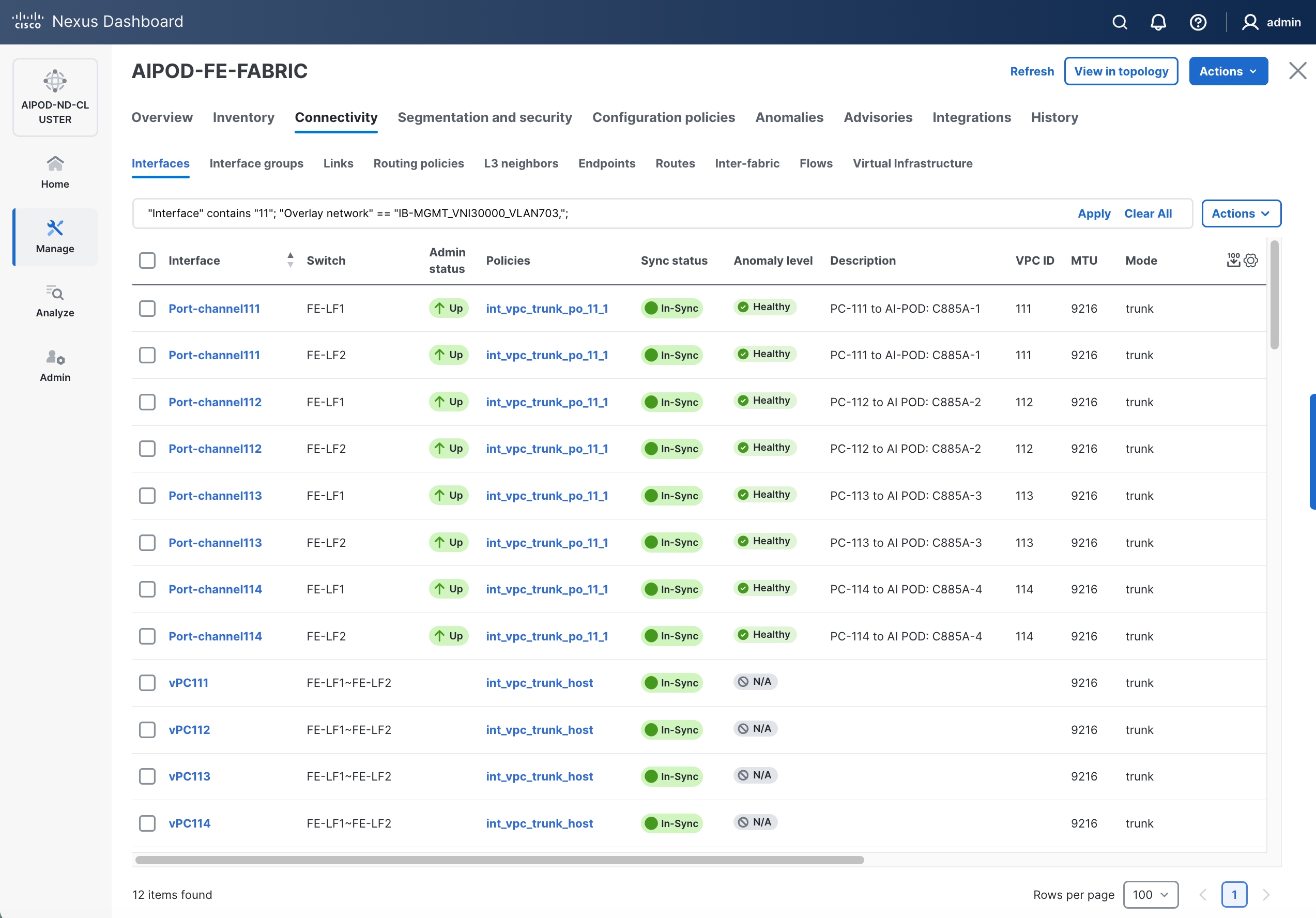

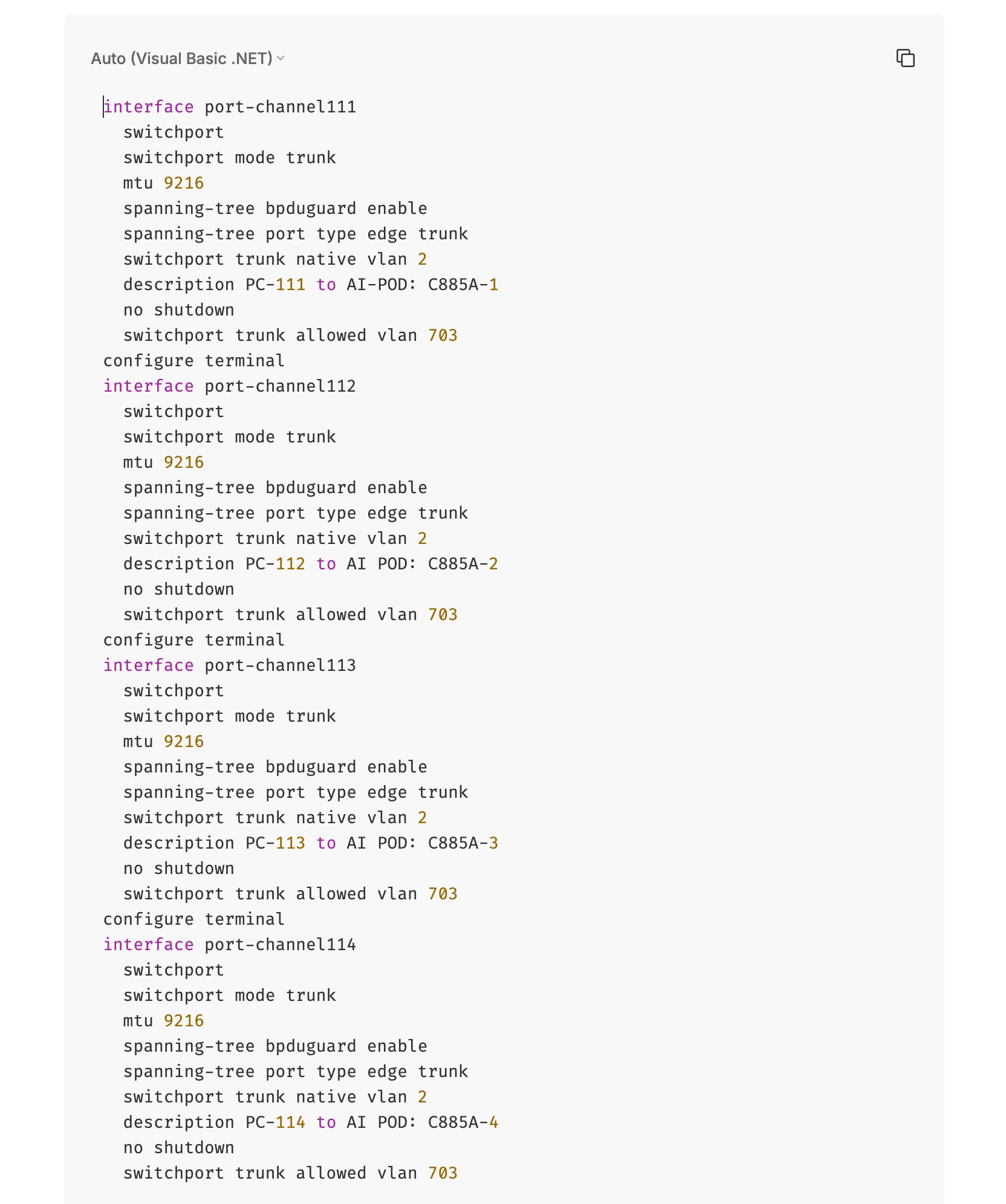

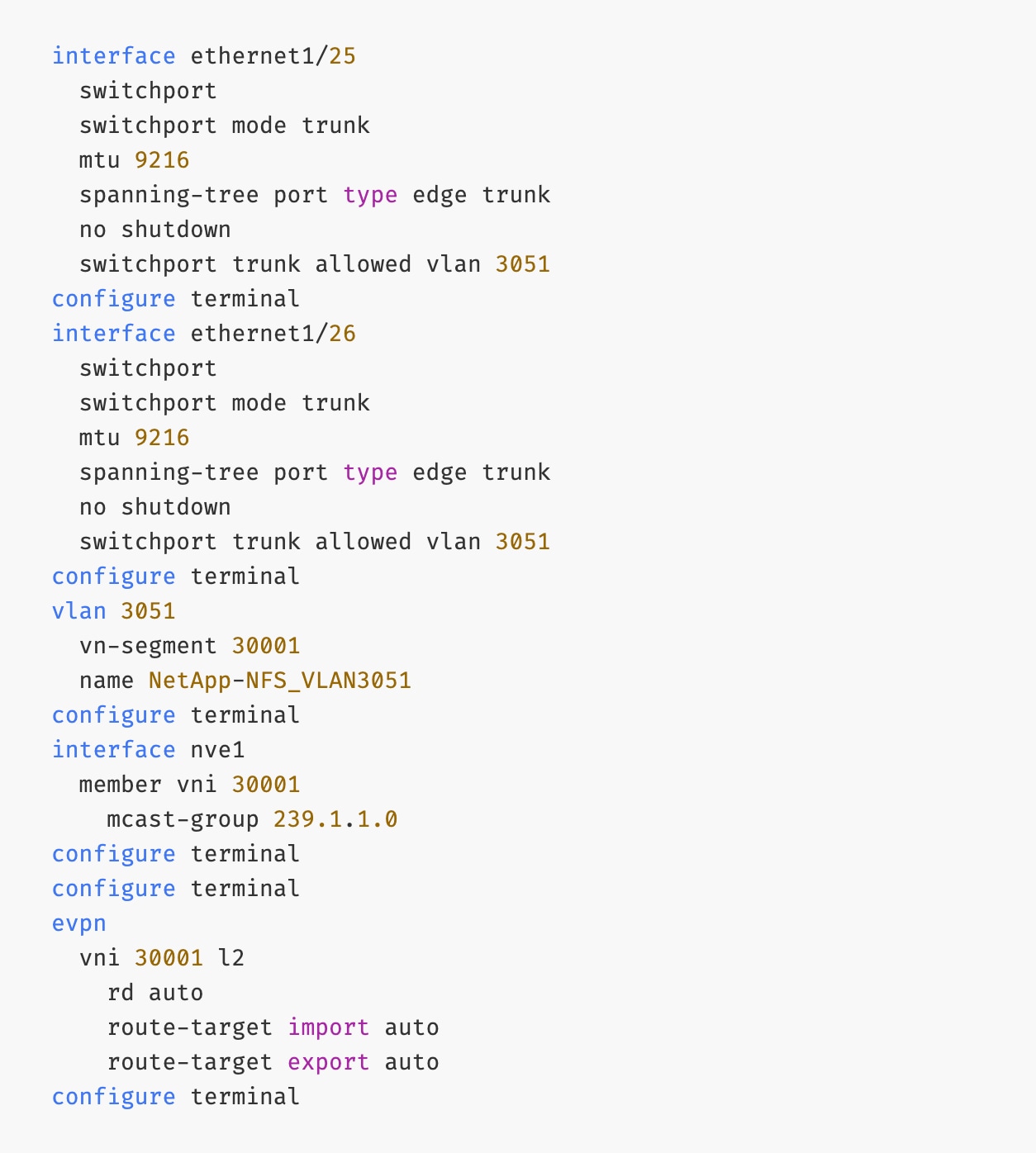

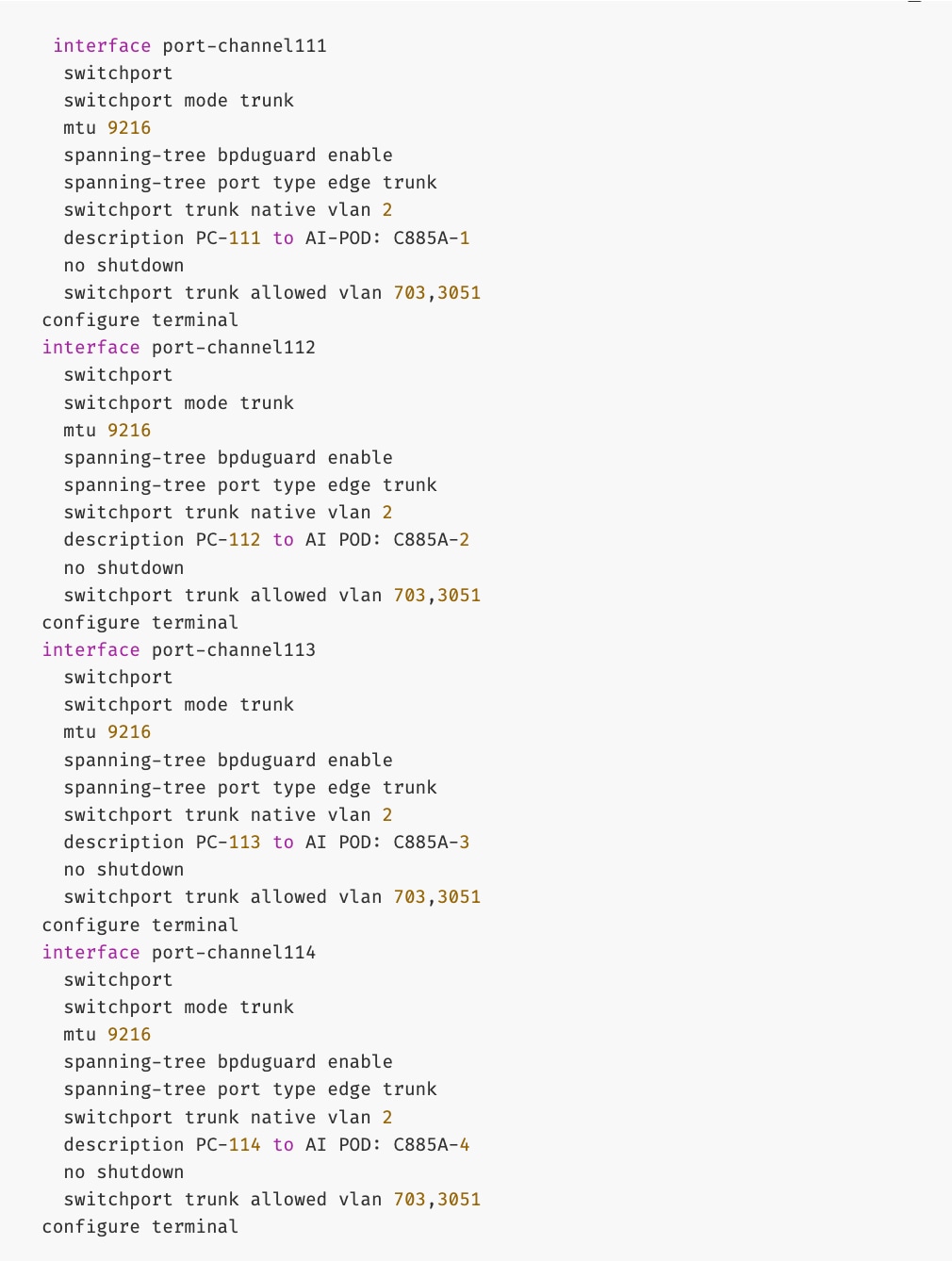

The deployed configuration on one leaf switch is provided as a reference below:

Procedure 2. Deploy vPCs to remaining UCS C885A GPU nodes

Step 1. Repeat the previous procedure to provision layer 2 connectivity from the compute/management leaf switches to the remaining 3 UCS nodes in the cluster.

Step 2. Verify that all the interfaces and port-channel is up on each switch in the leaf switch pair that connects to the UCS nodes. It may take a few minutes for the vPC to go from Not discovered to consistent state.

Enable In-Band Management Connectivity to UCS GPU and Management Nodes

The In-band management (IB-MGMT) network in the FE fabric will provide the following connectivity:

● Connectivity from control, management and services nodes to the UCS GPU nodes where the AI workload is running

● Connectivity to other networks (networks outside this FE fabric to other networks within the enterprise or external to the enterprise)

In a Red Hat OpenShift environment, this network will also serve as the Cluster IP pod network for the OpenShift cluster running on UCS management (Kubernetes Control) nodes and UCS GPU (Kubernetes Worker) nodes.

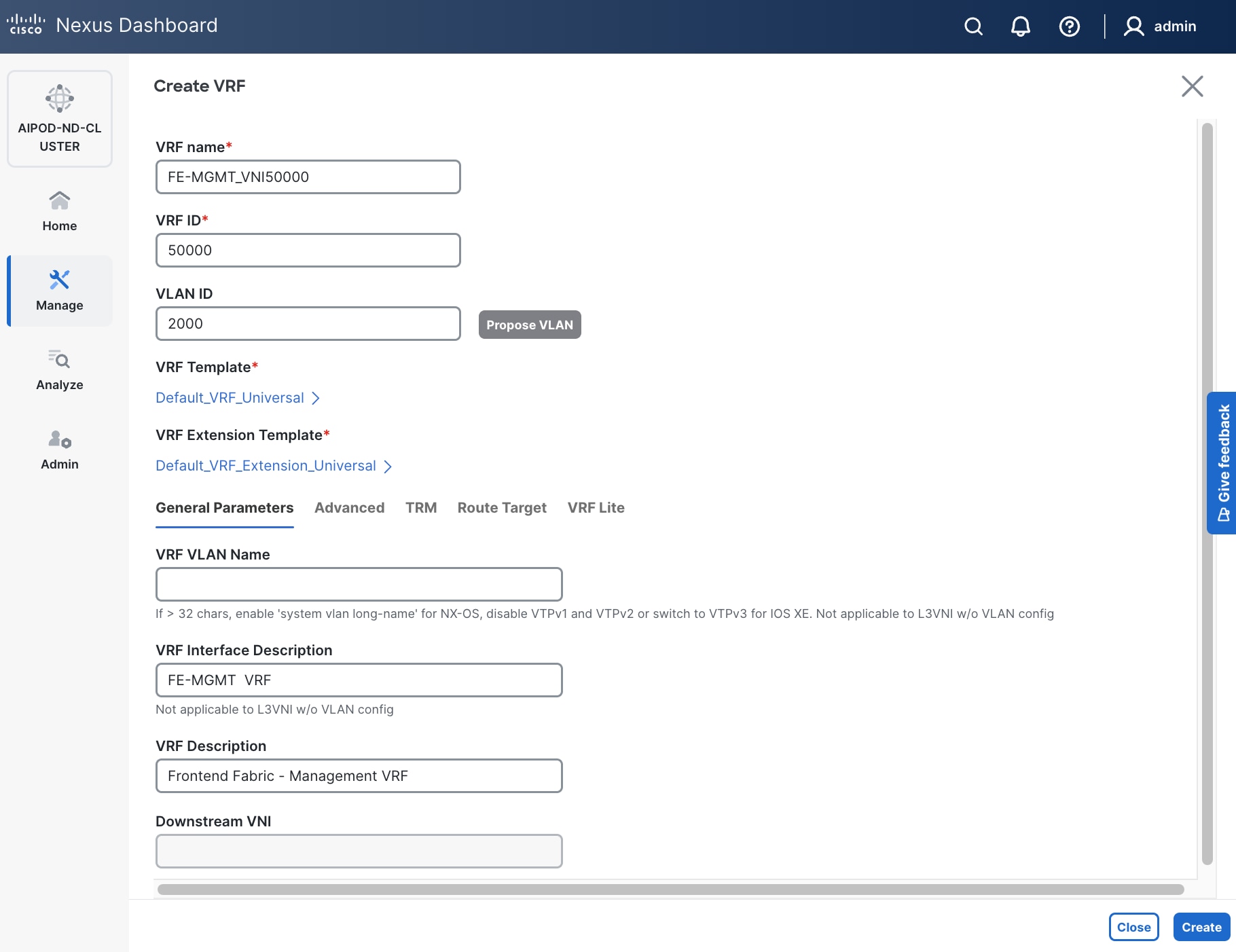

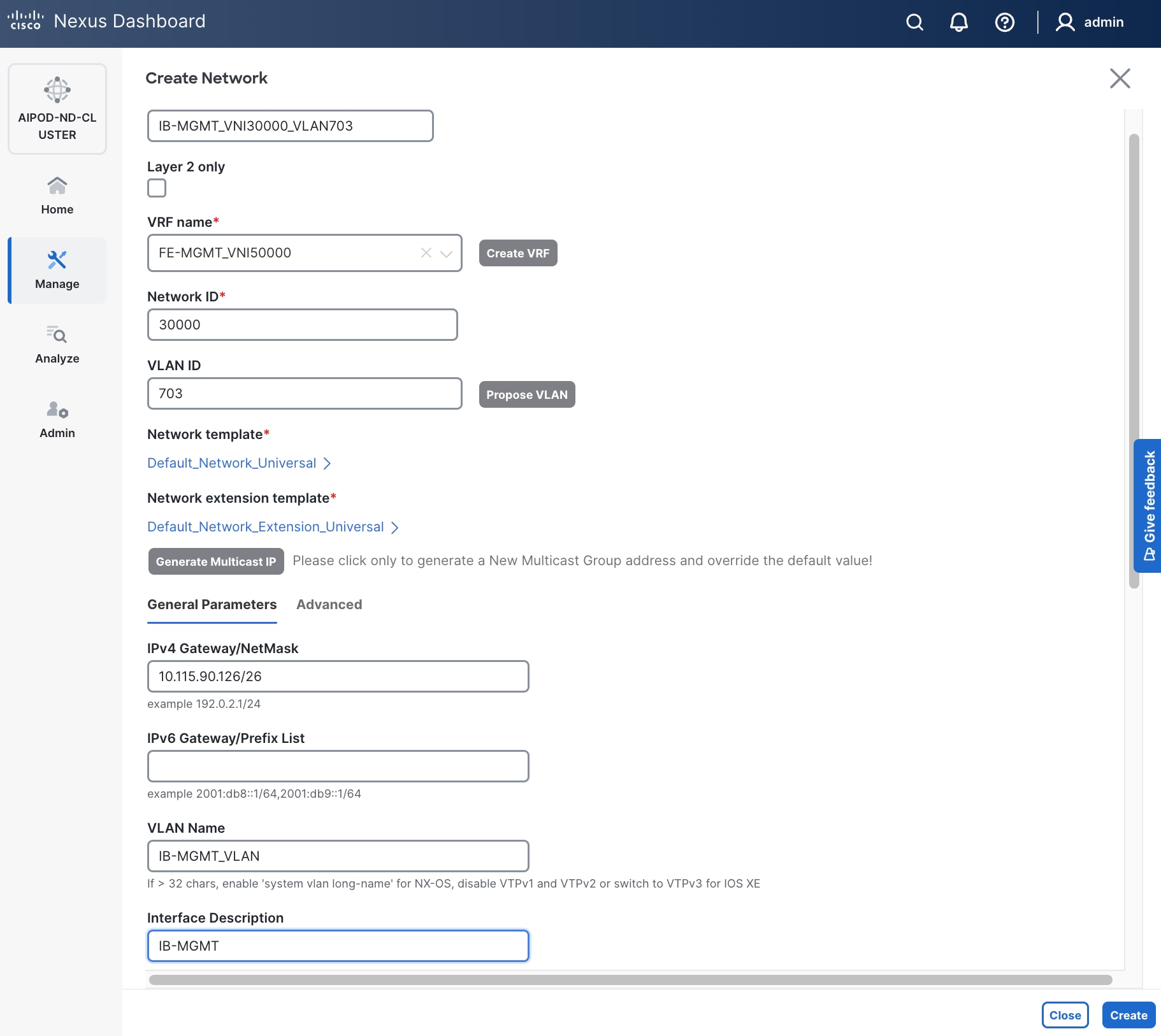

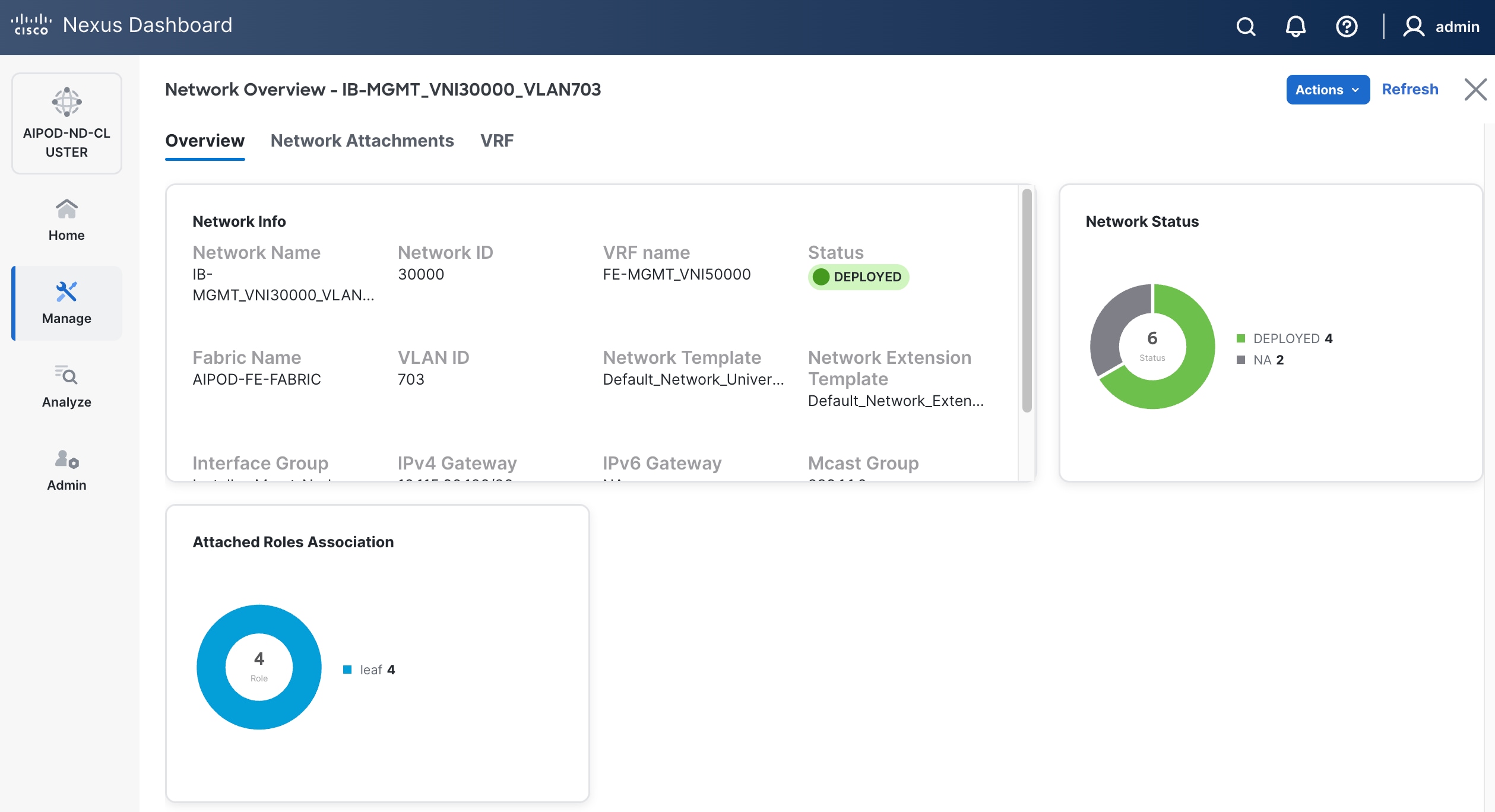

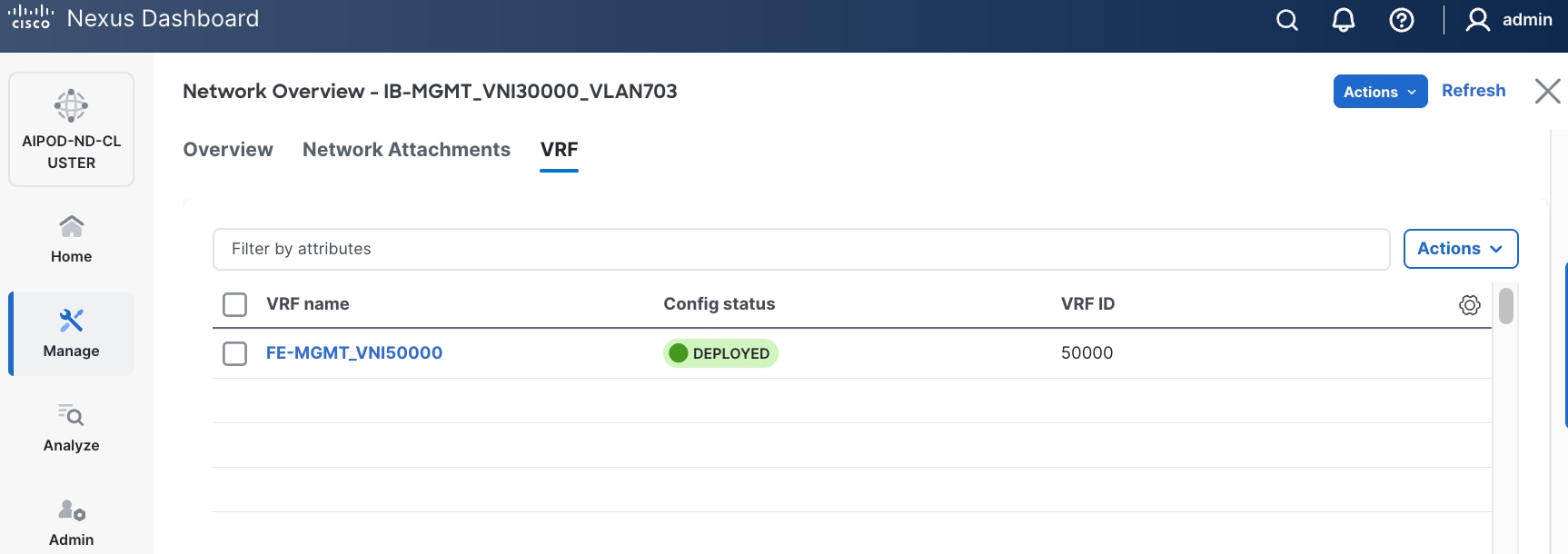

Table 12. Setup Parameters for FE Fabric: In-Band Management Connectivity to UCS Management and GPU Nodes

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| IB-MGMT Network |

|

|

| Name |

IB-MGMT_VN30000_VLAN703 |

|

| Layer 2 Only |

No |

|

| IB-MGMT VRF |

|

|

| VRF Name |

FE-MGMT_VN50000 |

|

| VRF ID |

50000 |

(System Proposed) |

| VLAN ID |

2000 |

(System Proposed) |

| VRF Interface Description |

FE-MGMT VRF |

|

| VRF Description |

Frontend Fabric – Management VRF |

|

| IB-MGMT Network Contd. |

|

|

| Network ID |

30000 |

|

| VLAN ID |

703 |

|

| IPv4 Gateway/Netmask |

10.115.90.126/26 |

|

| VLAN Name |

IB-MGMT_VLAN |

|

| Interface Description |

IB-MGMT |

|

| UCS C885A GPU Nodes |

|

|

| vPC Leaf Switch Pair |

FE-LF1, FE-LF2 |

vPC Leaf Switch Pair |

| UCS C885-A Node-1 Interface |

Port-Channel 111 |

|

| UCS C885-A Node-2 Interface |

Port-Channel 112 |

|

| UCS C885-A Node-3 Interface |

Port-Channel 113 |

|

| UCS C885-A Node-4 Interface |

Port-Channel 114 |

|

| Management UCS X-Direct Chassis |

|

|

| vPC Leaf Switch Pair |

FE-LF1, FE-LF2 |

|

| UCS X-Direct (-A Uplinks) |

Port-Channel 15 |

|

| UCS X-Direct (-B Uplinks) |

Port-Channel 16 |

|

To deploy the in-band management network and enable connectivity to the UCS GPU nodes, follow the procedures below.

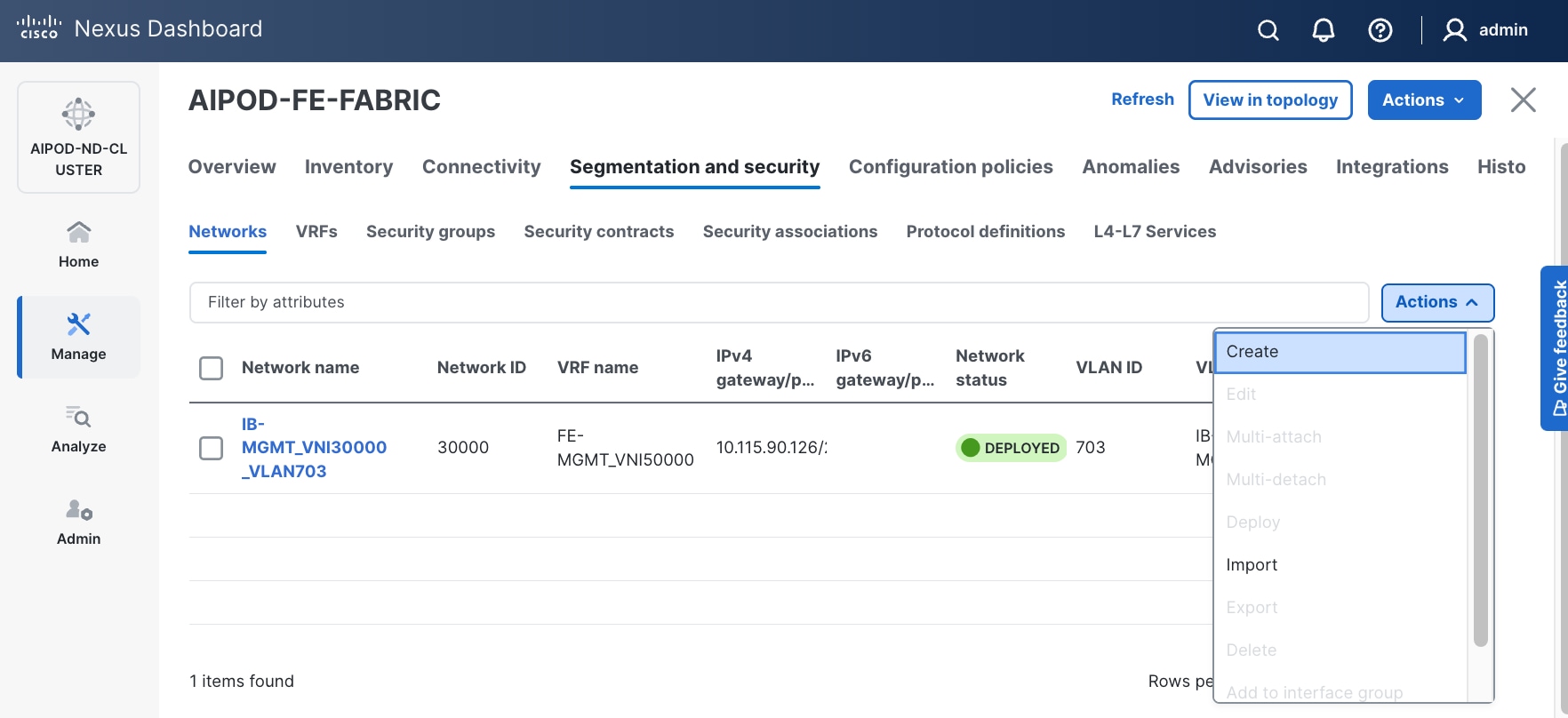

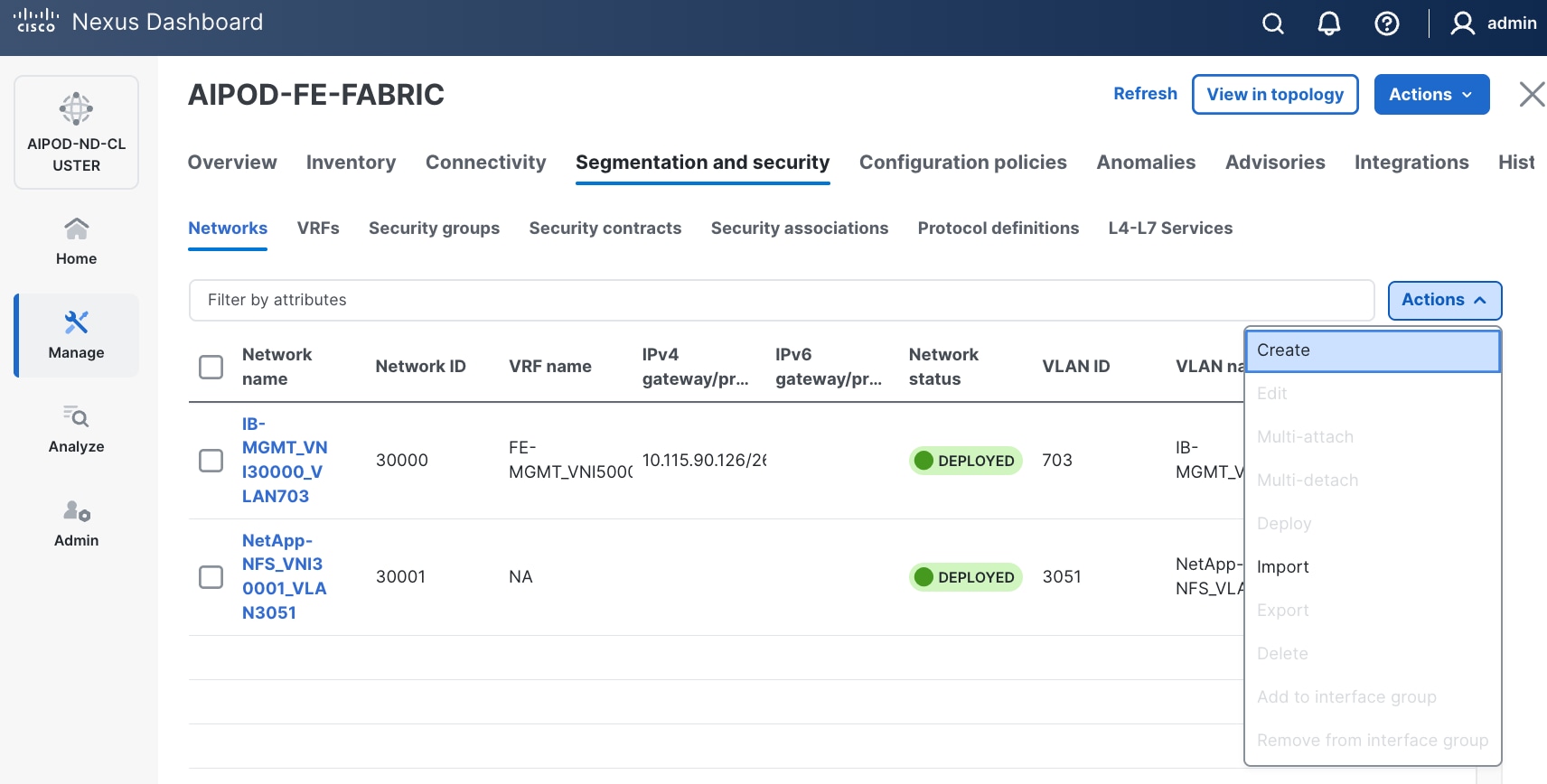

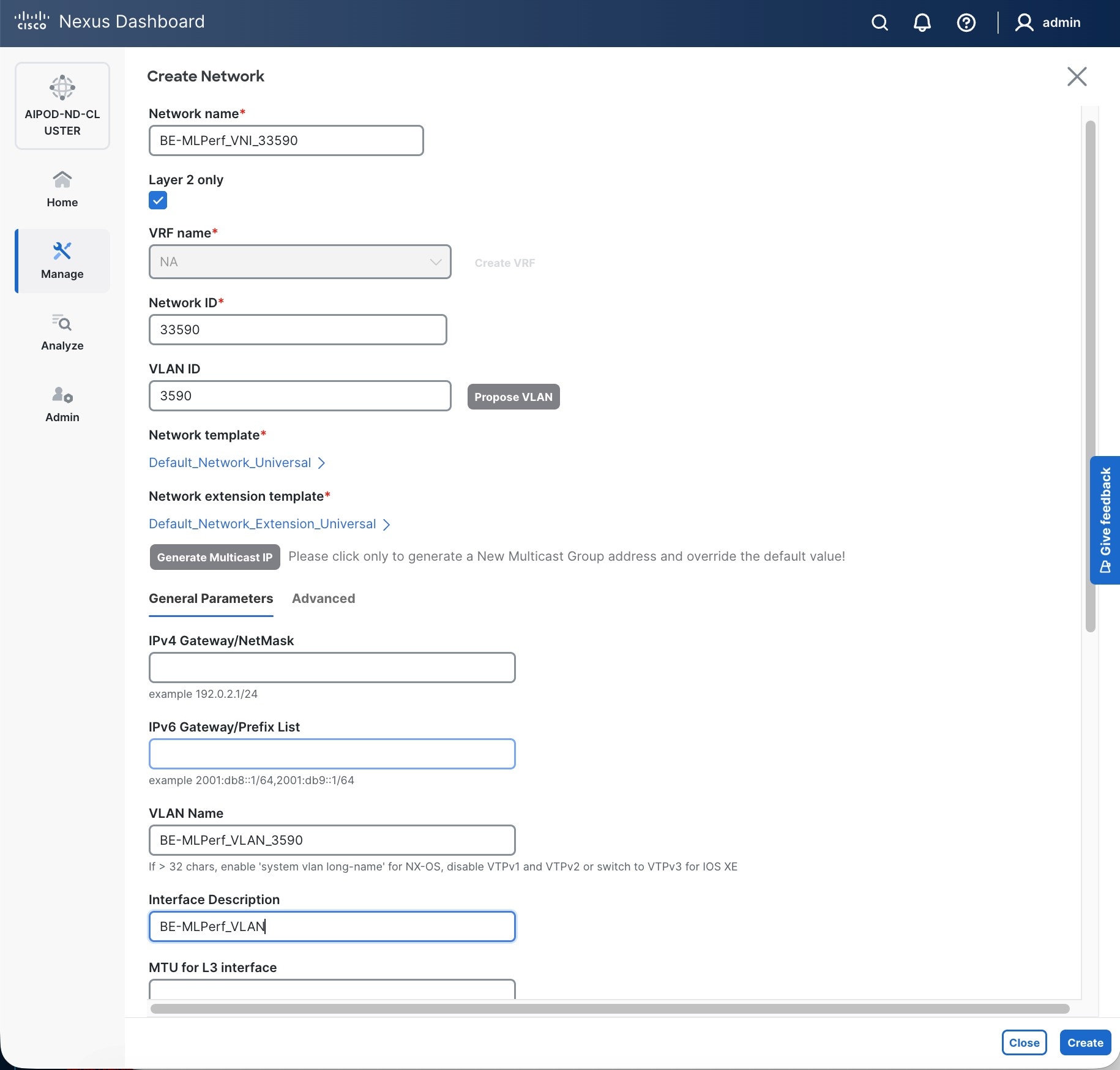

Procedure 1. Deploy In-Band Management Connectivity for UCS GPU Nodes

Step 1. Use a web browser to navigate to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

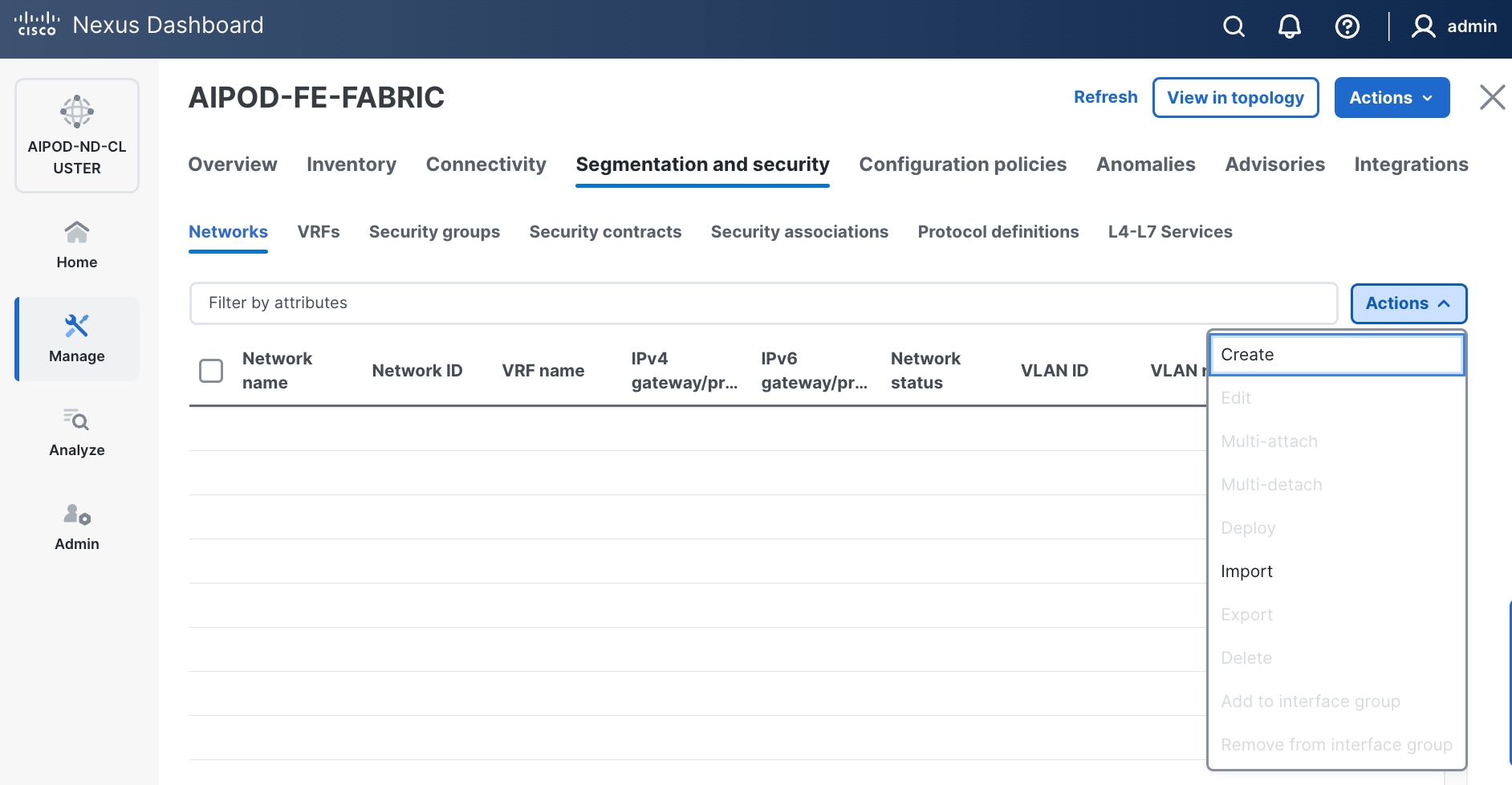

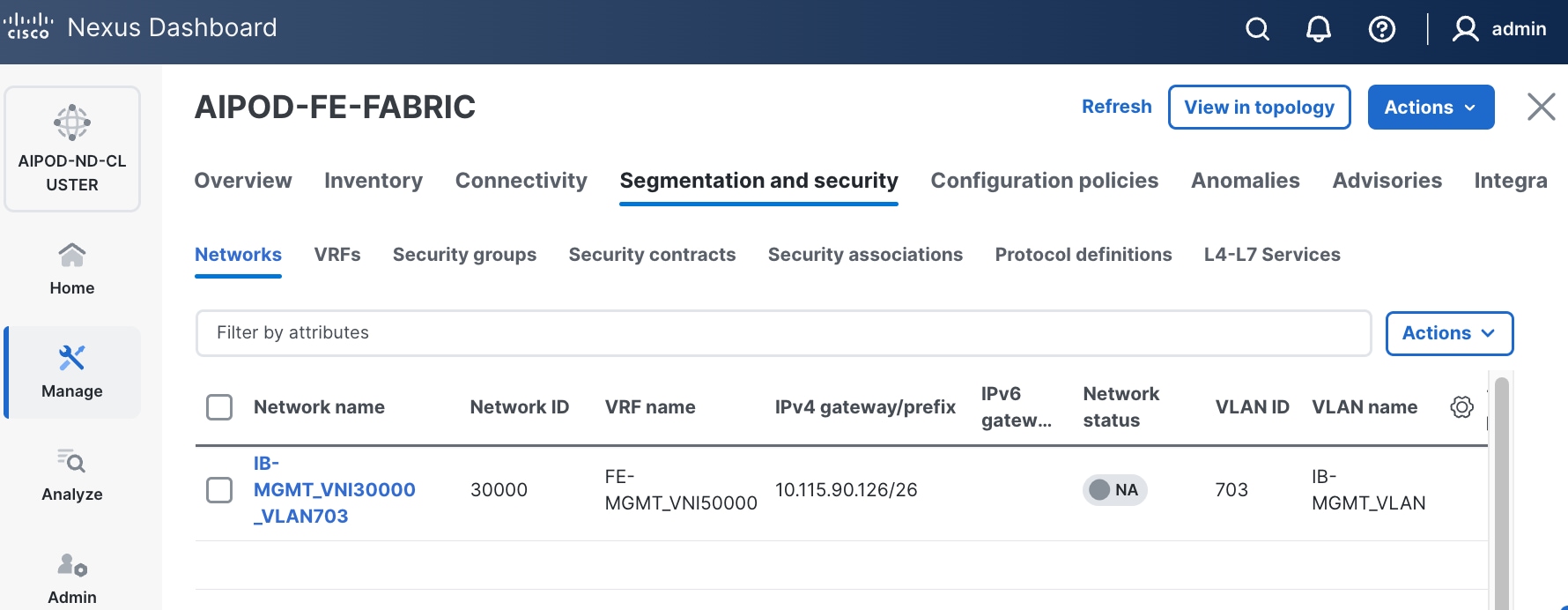

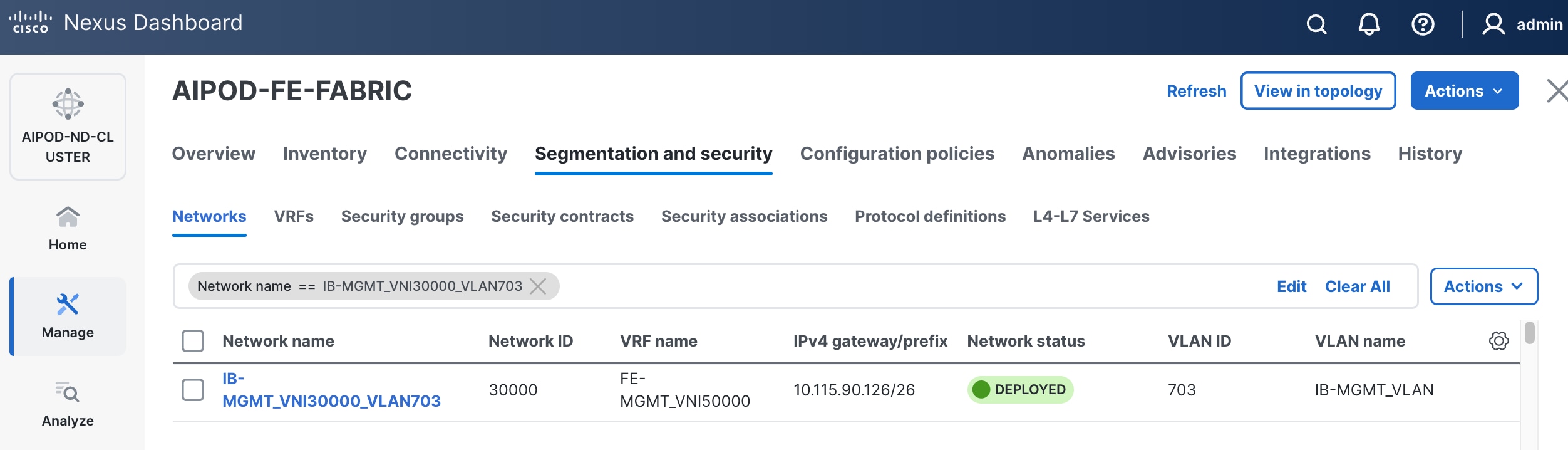

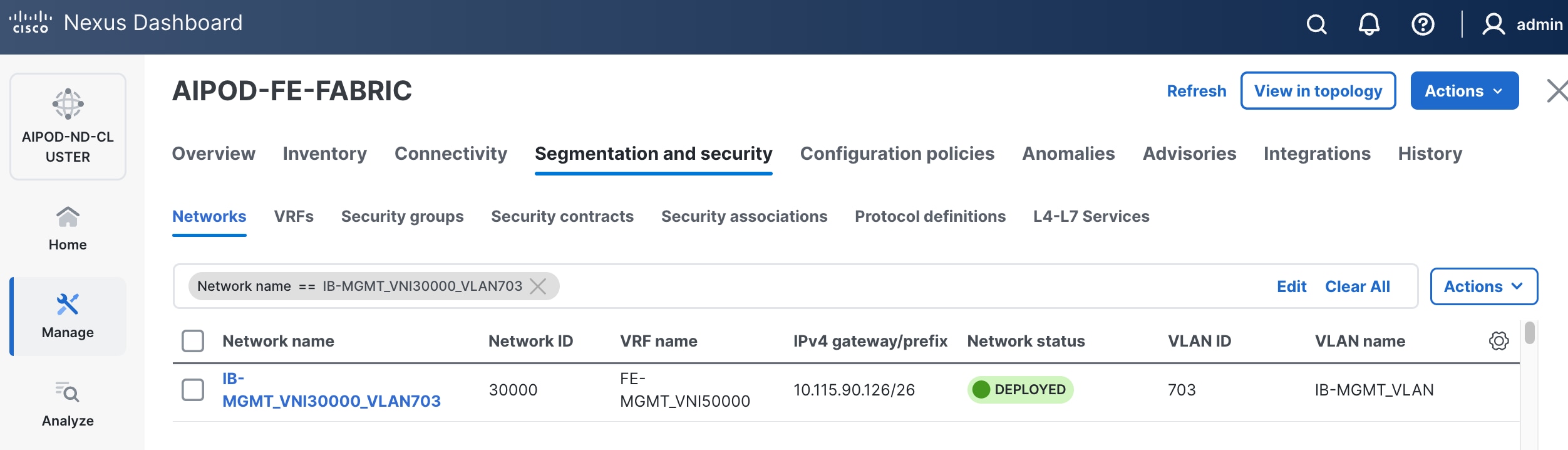

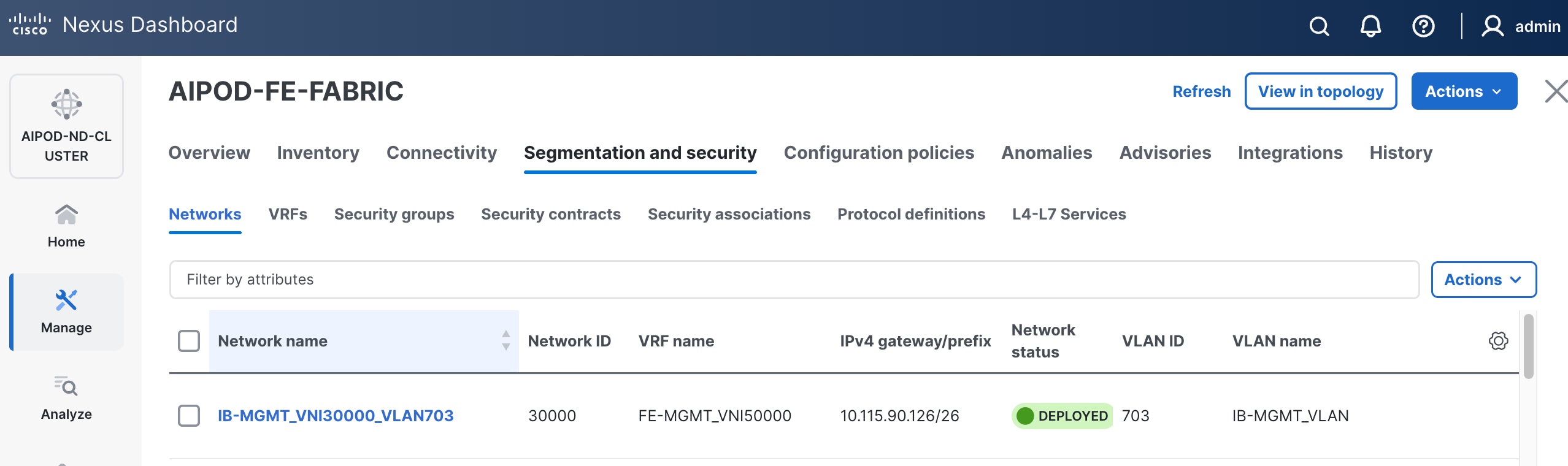

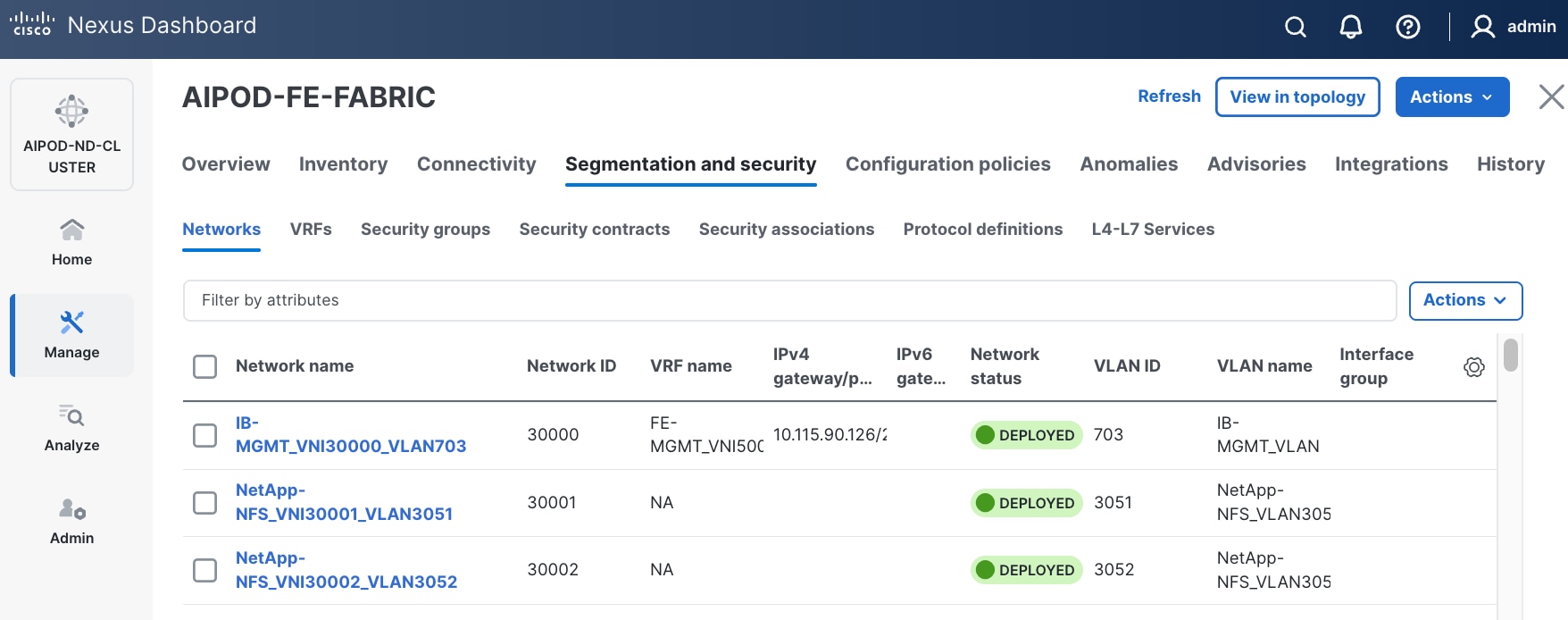

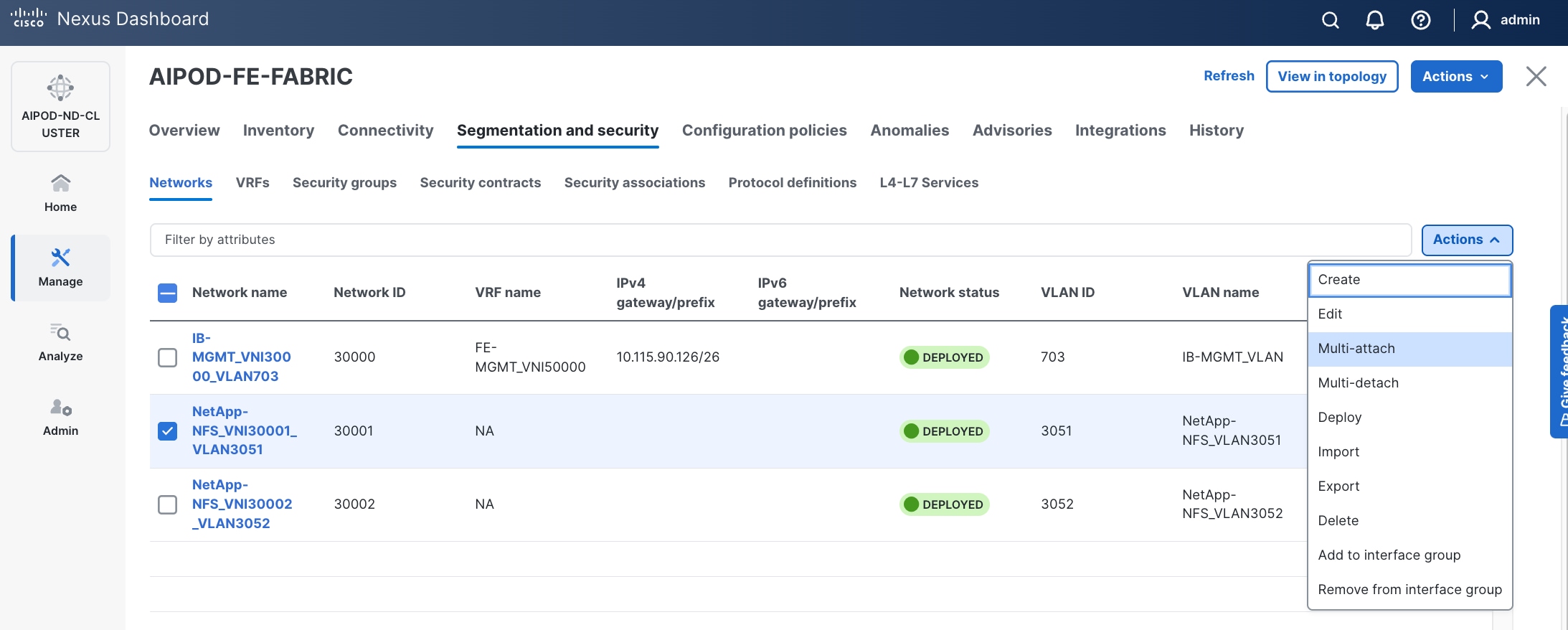

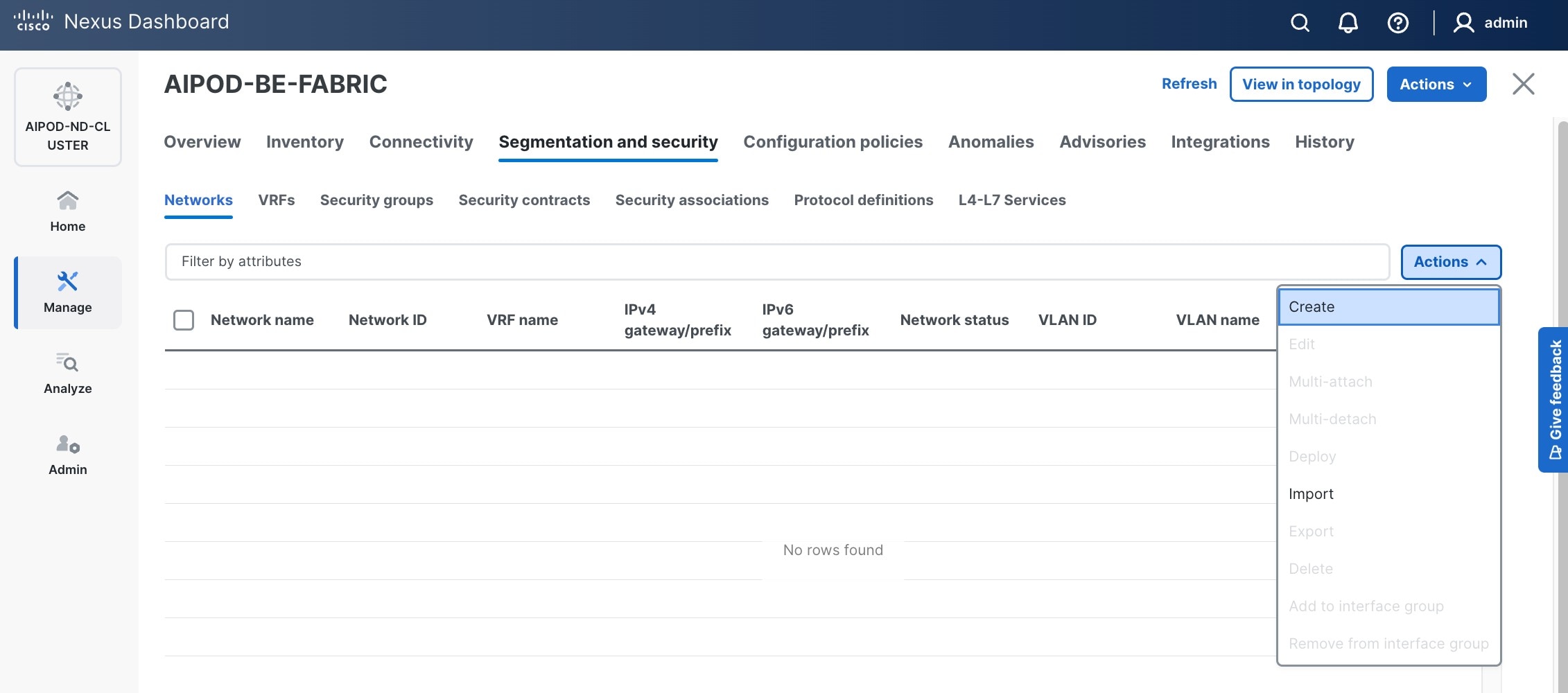

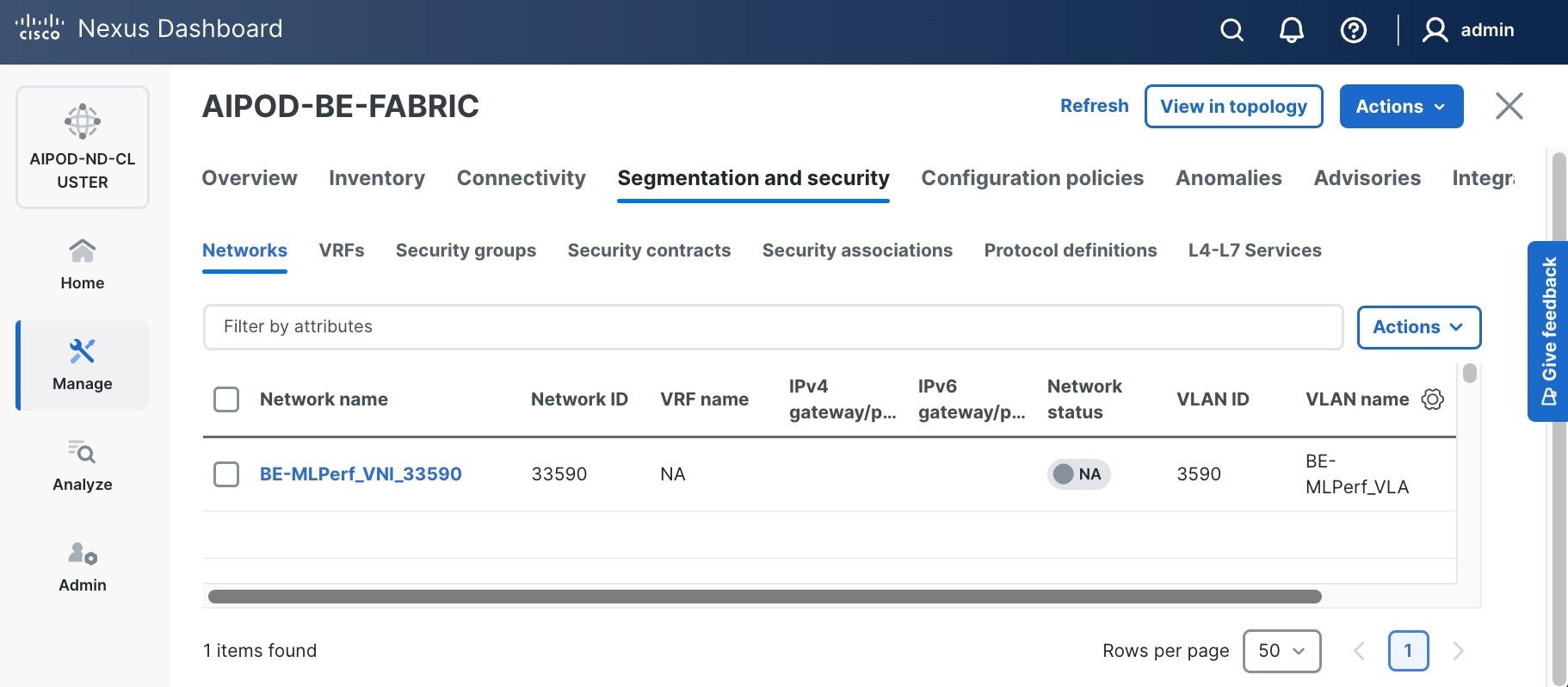

Step 3. Select the FE fabric and navigate to Segmentation and Security > Networks tab.

Step 4. Click the lower of the two Actions buttons and select Create from the list.

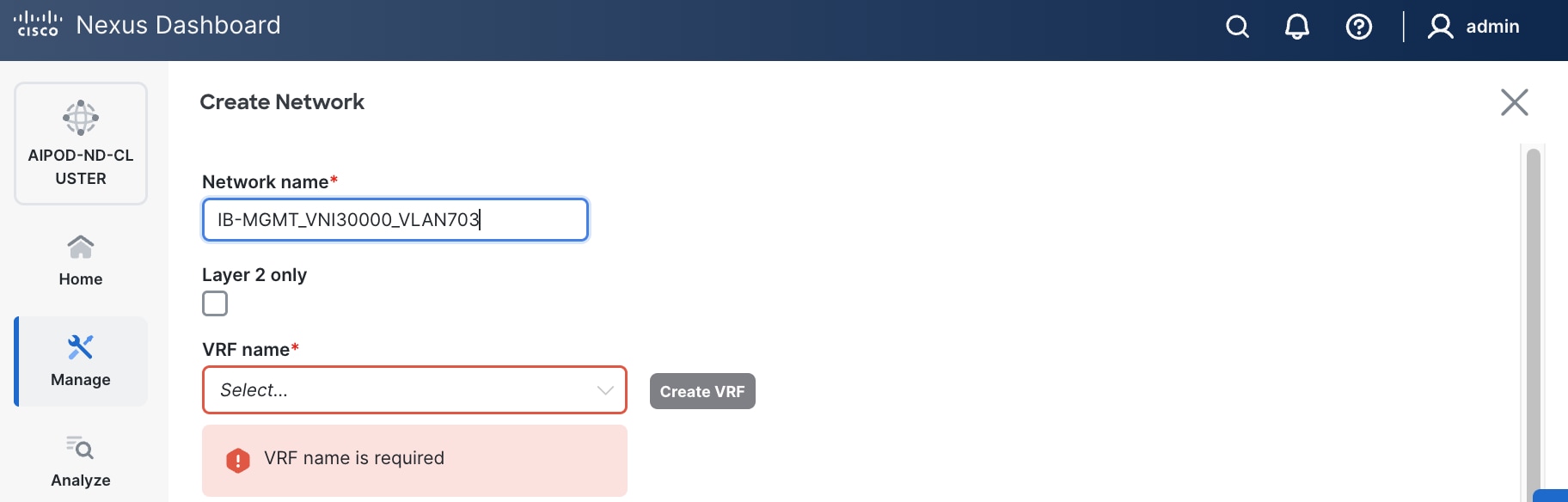

Step 5. In the Create Network window, specify the following:

● Network nameNetwork name for the IB-MGMT network.

● Leave unchecked the Layer 2 only Layer 2 only checkbox as IB-MGMT is a layer 3 overlay network.

● VRF nameVRF name. If a VRF hasn't been created already, you have an option from this window to also create a VRF.

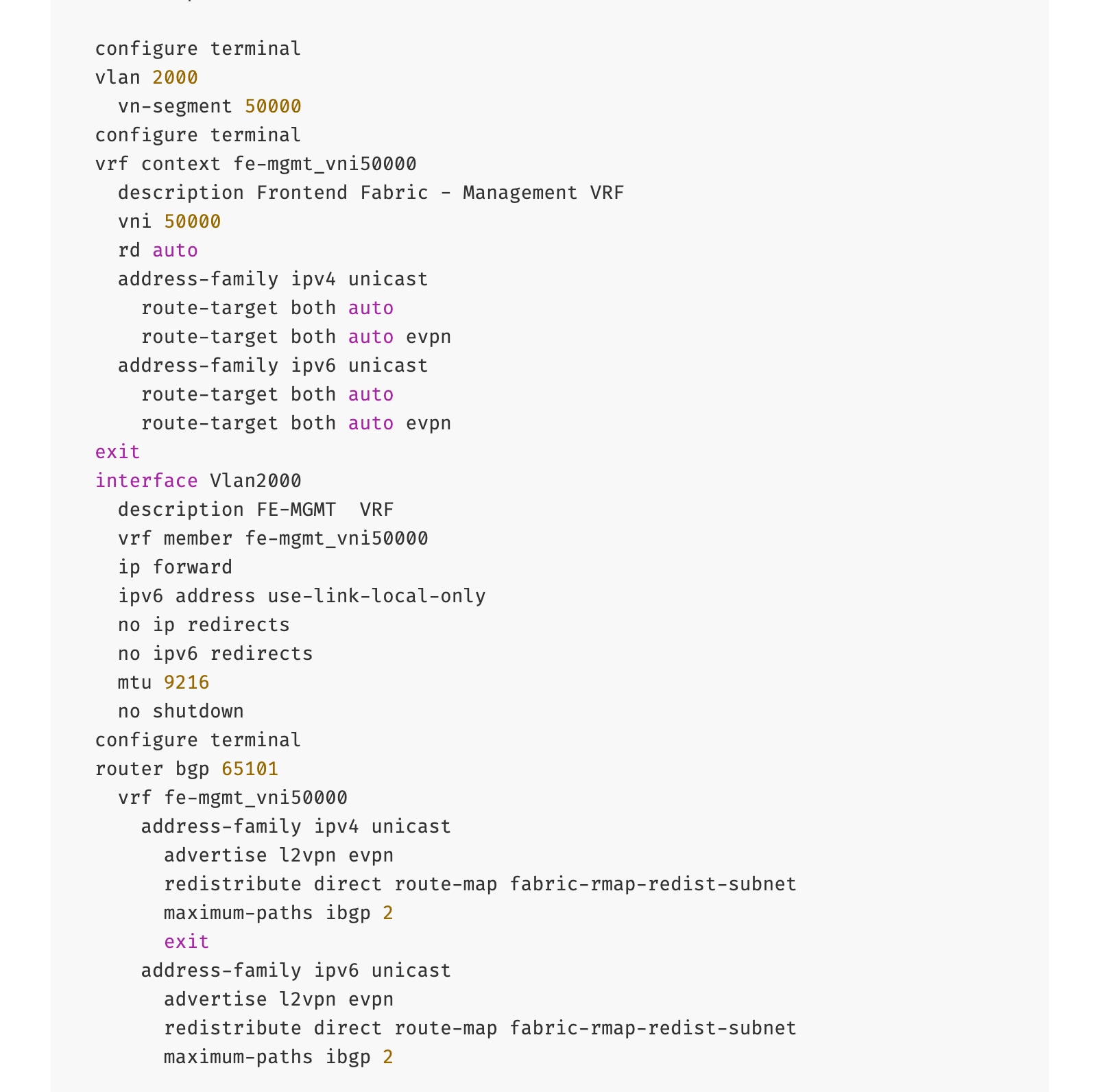

● To create a new VRF, click Create Create VRF. In the Create VRF Create VRF window, specify VRF ID (VRF ID (or use default), VLAN ID (VLAN ID (or click Propose VLAN Propose VLAN to let system define a VLAN), and optionally other parameters as shown in the screenshot.

Step 6. Click Create to create the VRF and return to the Create Network window.

Step 7. In the Create Network window, specify the following:

● Network IDNetwork ID or use default.

● VLAN ID VLAN ID or click Propose VLAN Propose VLAN button to let system define a VLAN.

● In the General Parameters tab, specify IP Gateway/Netmask, VLAN Name and Interface Description.

Step 8. Click Create to create the Network.

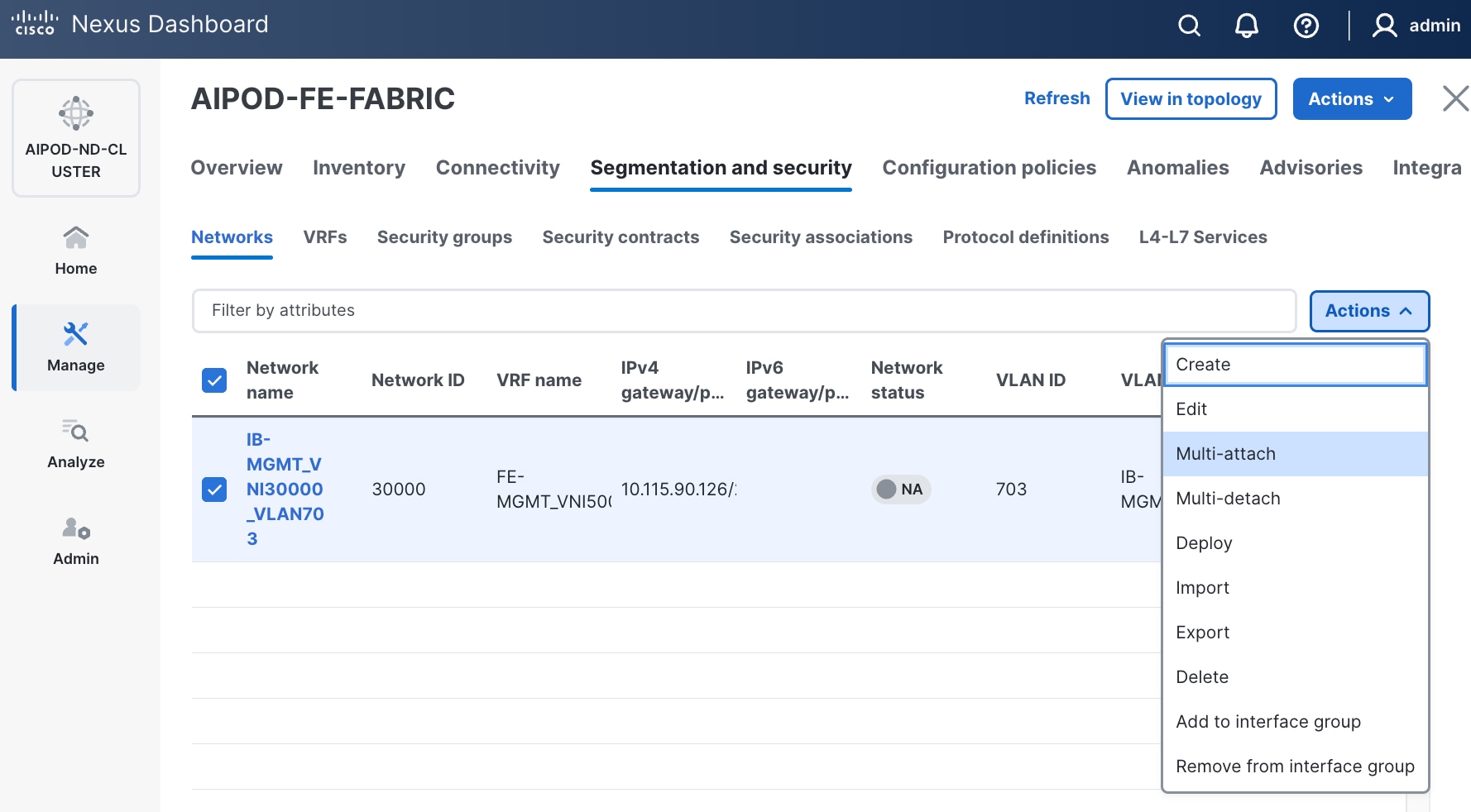

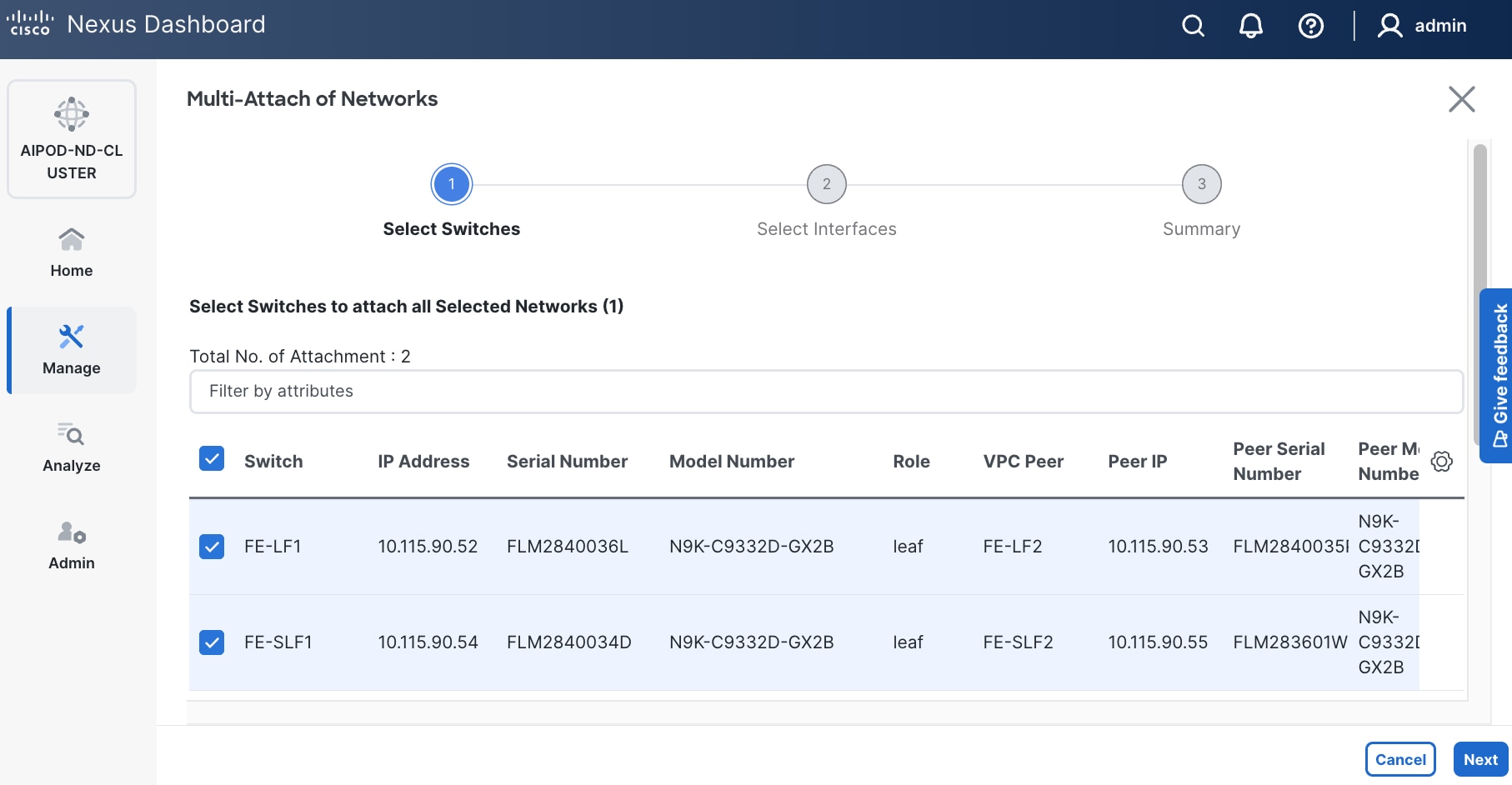

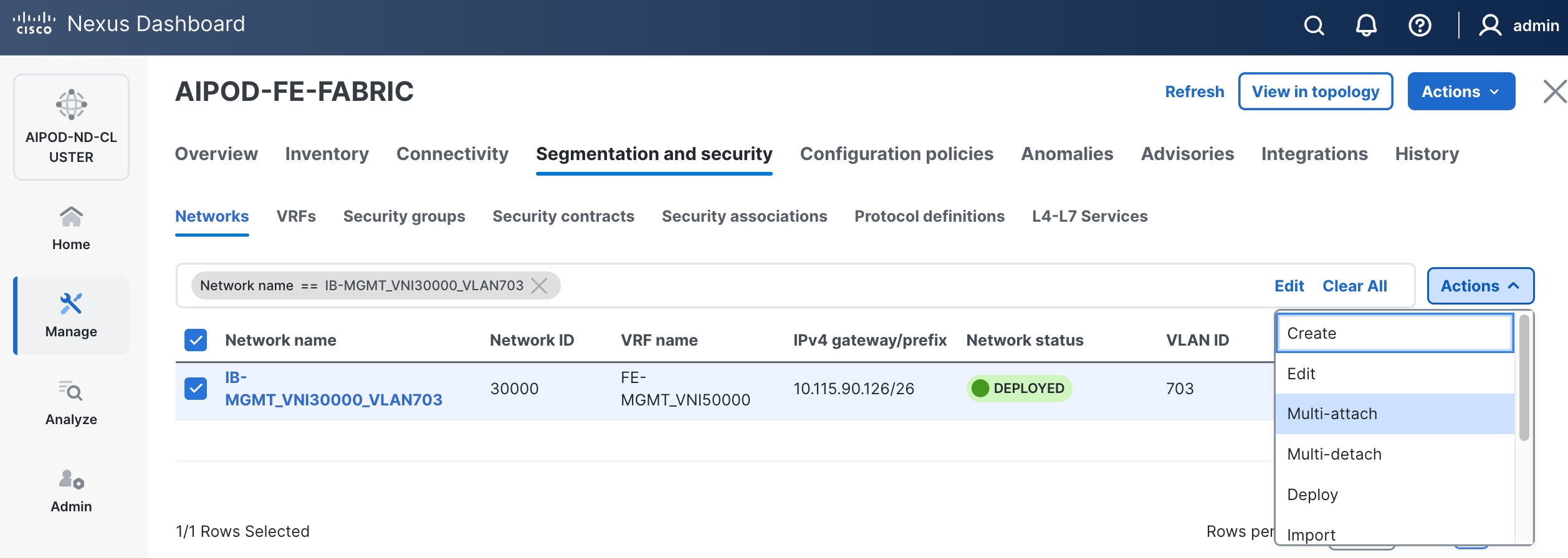

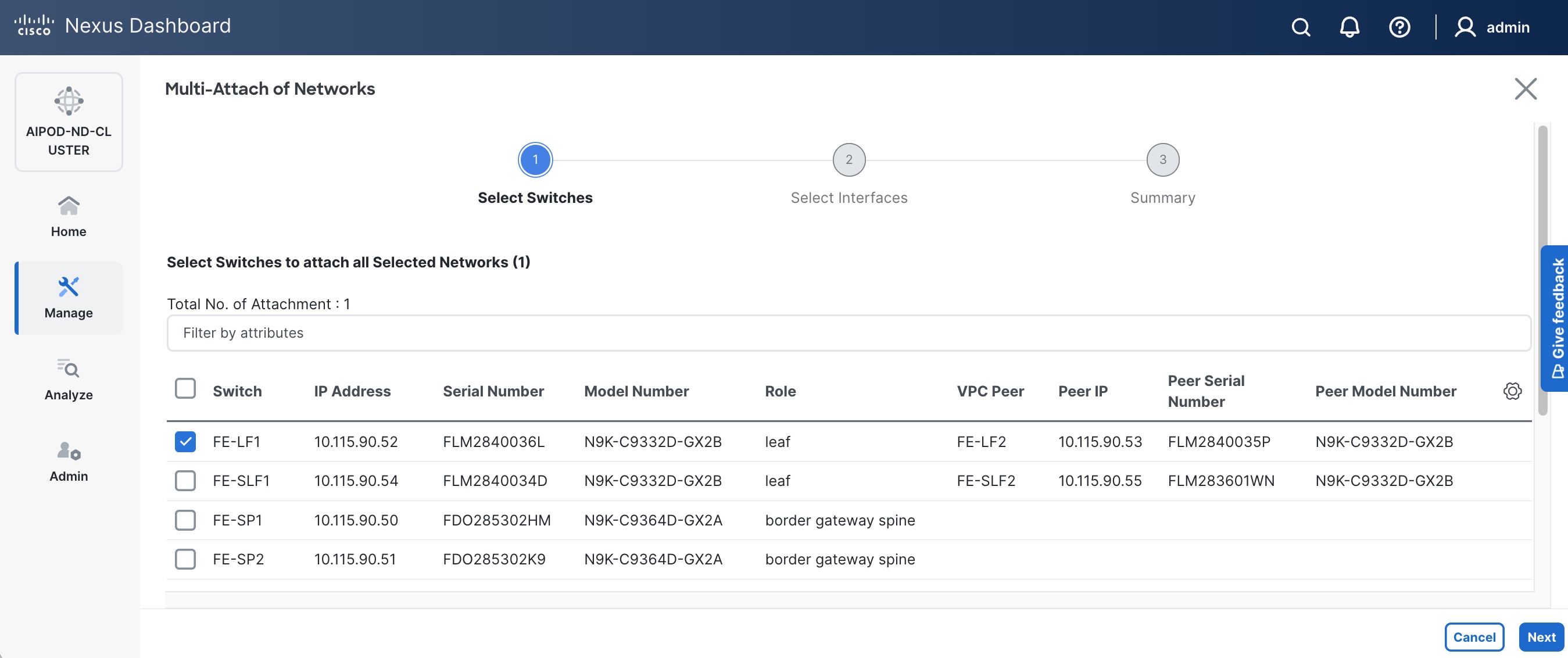

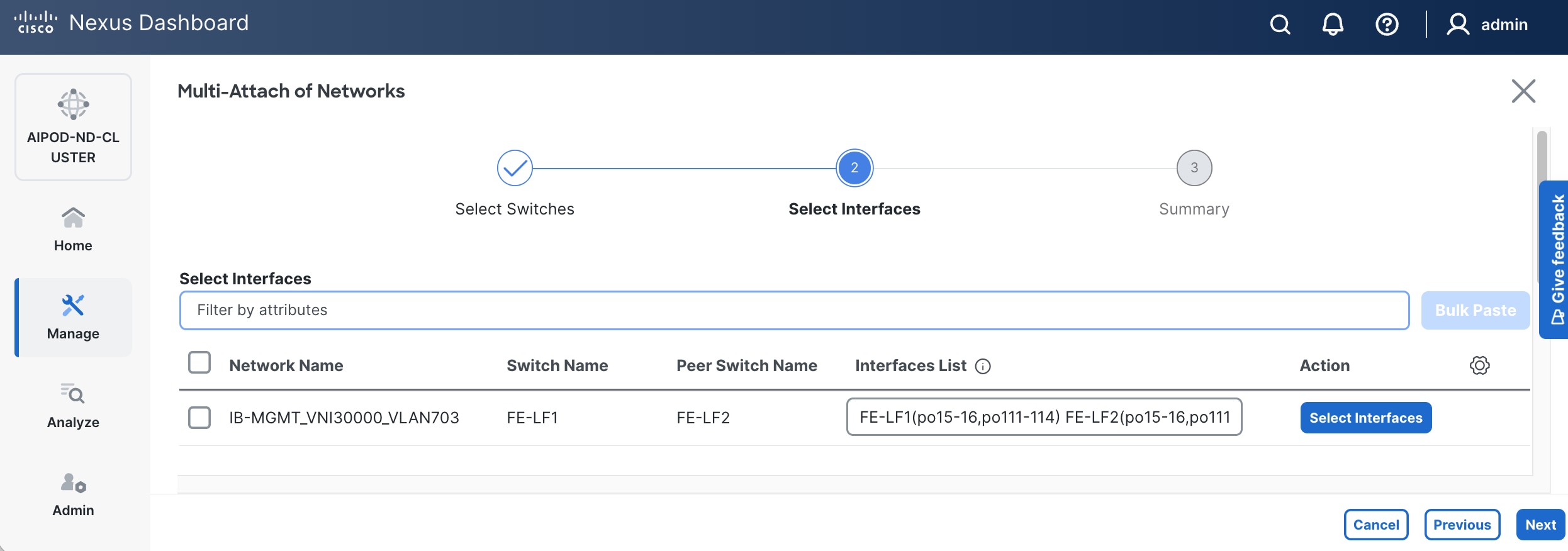

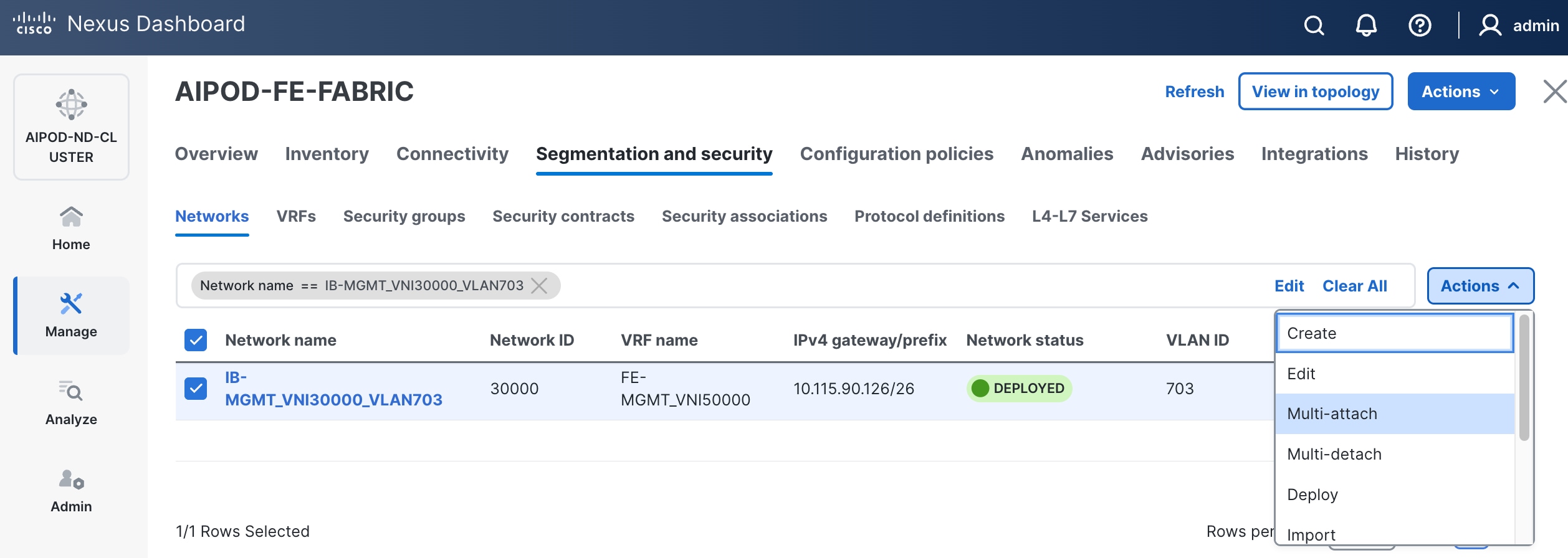

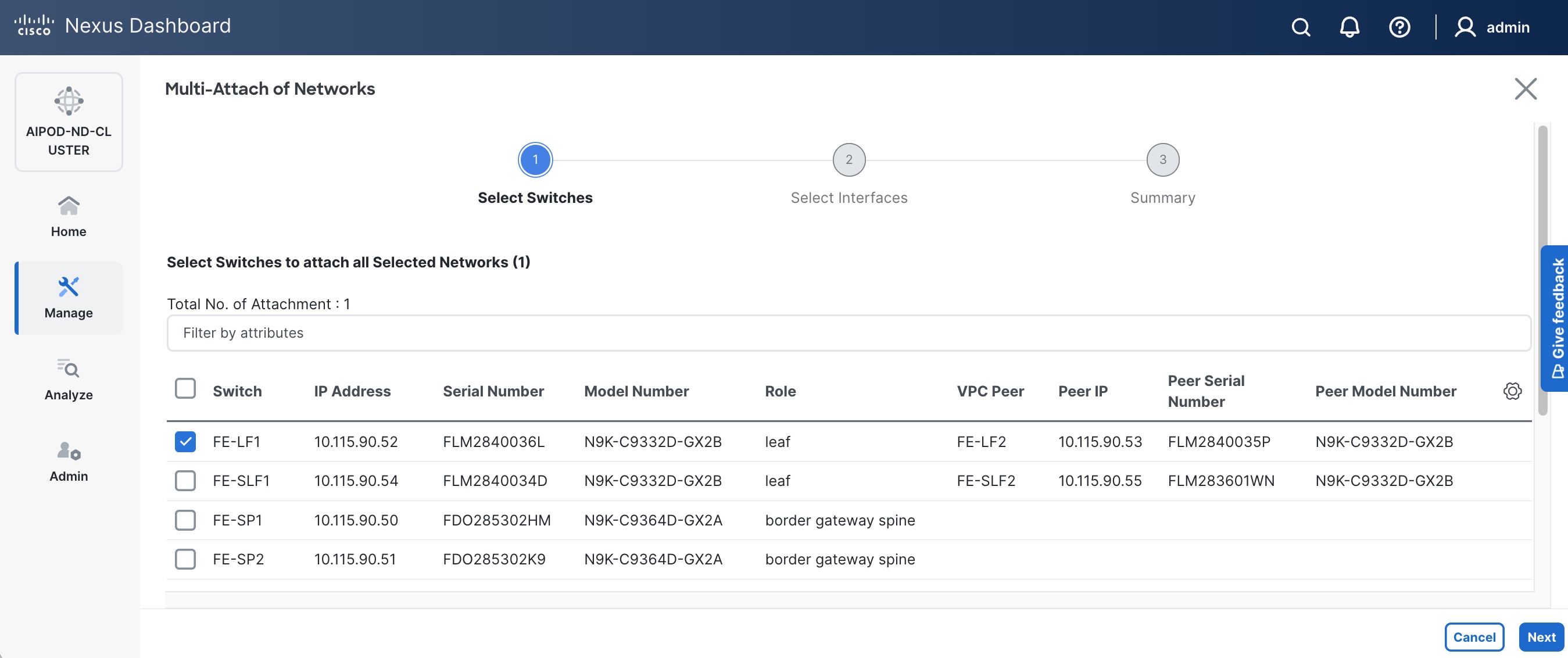

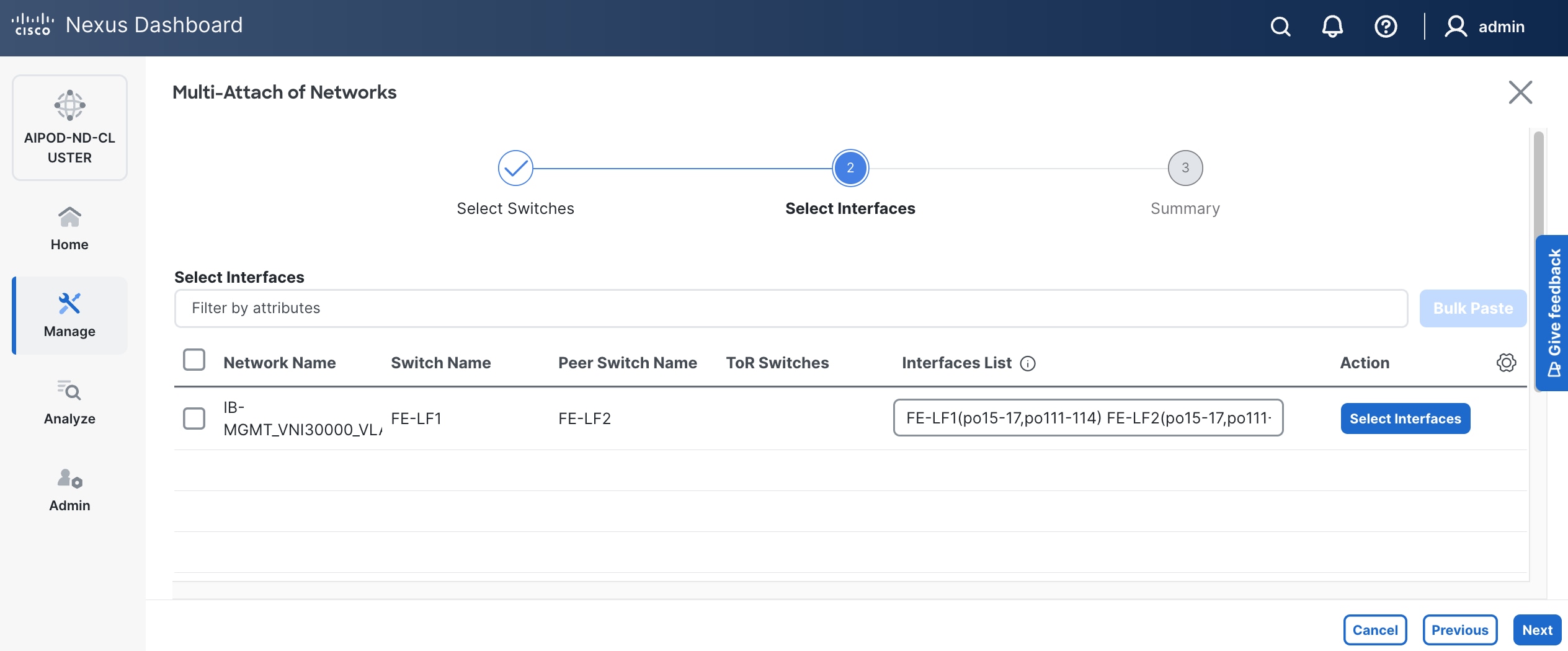

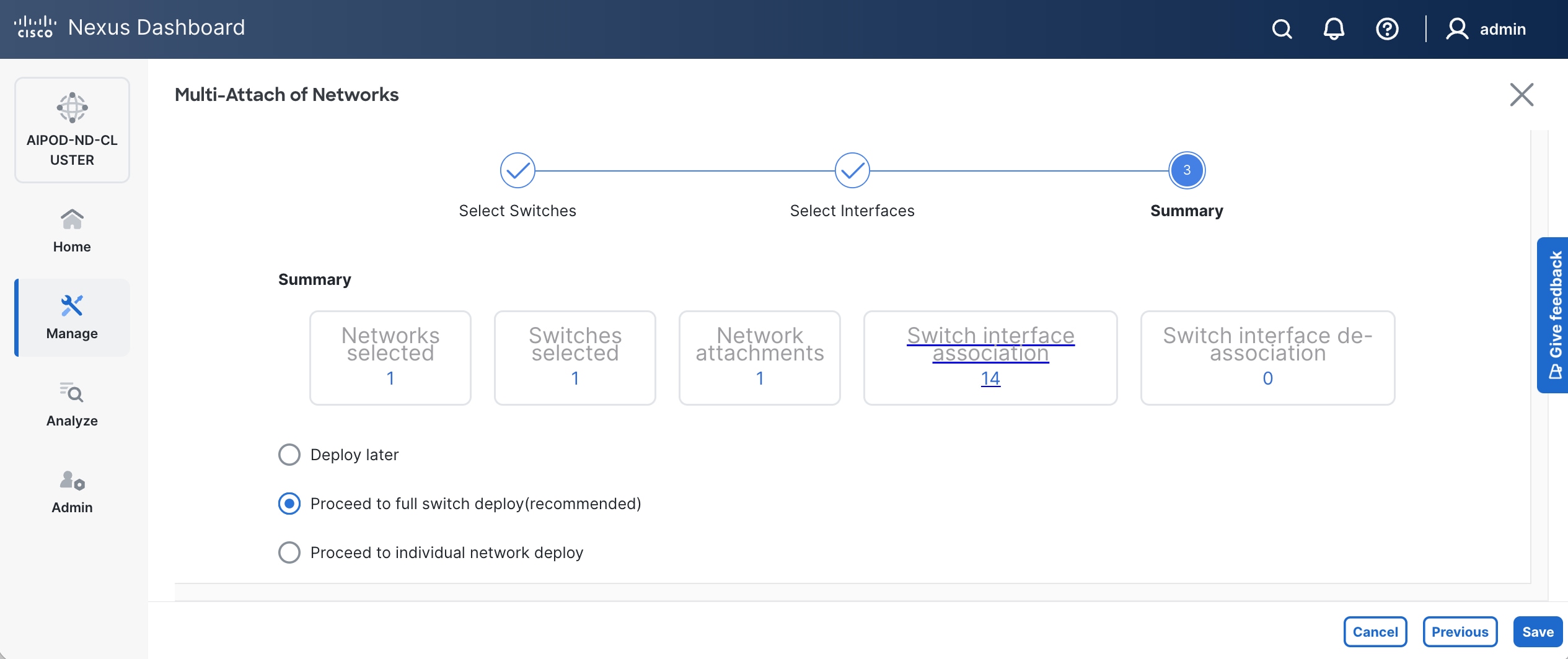

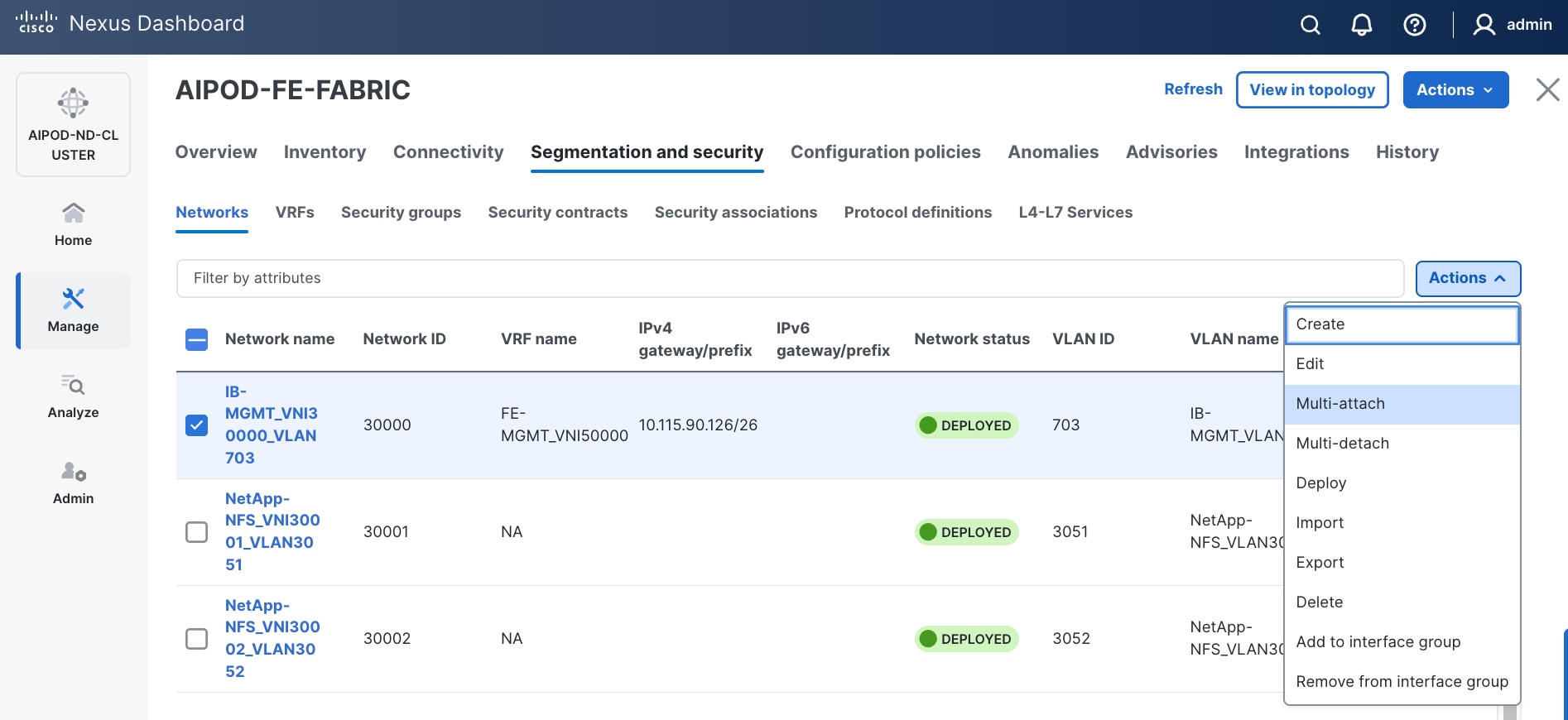

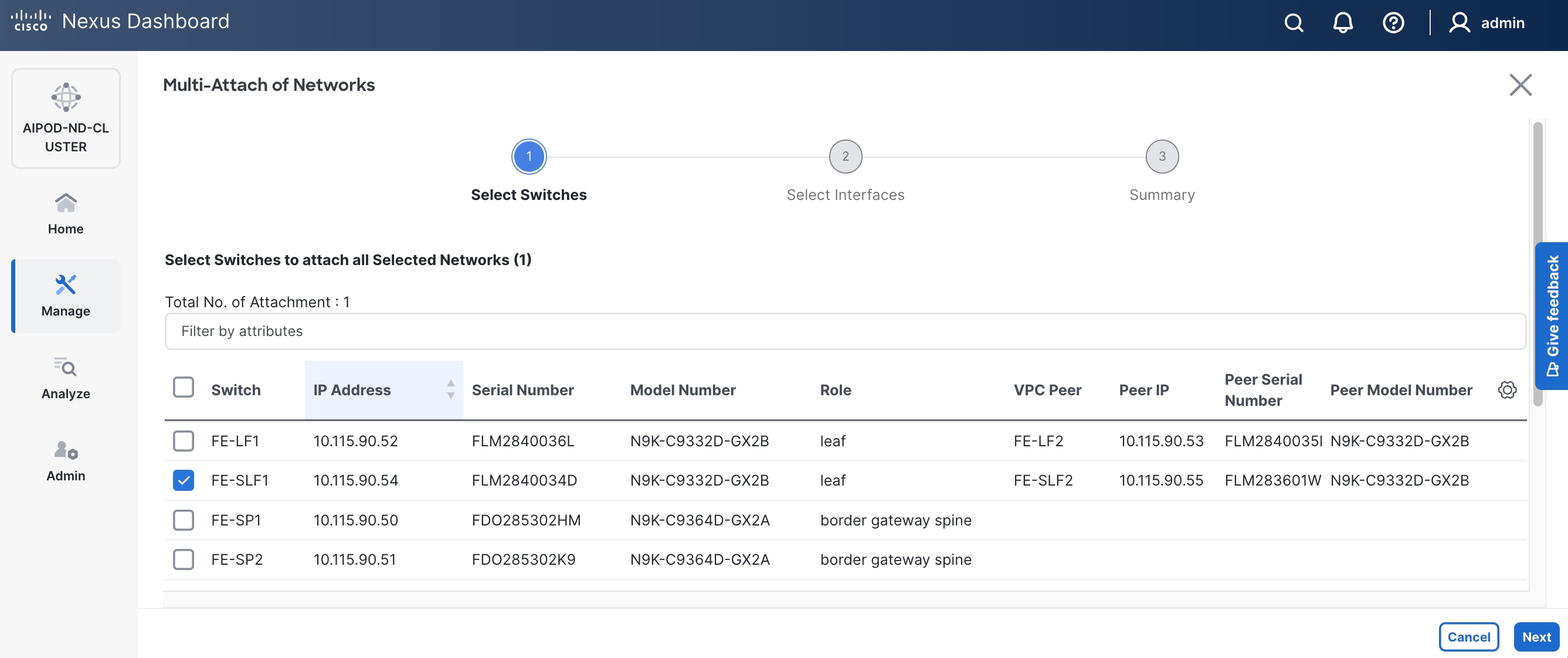

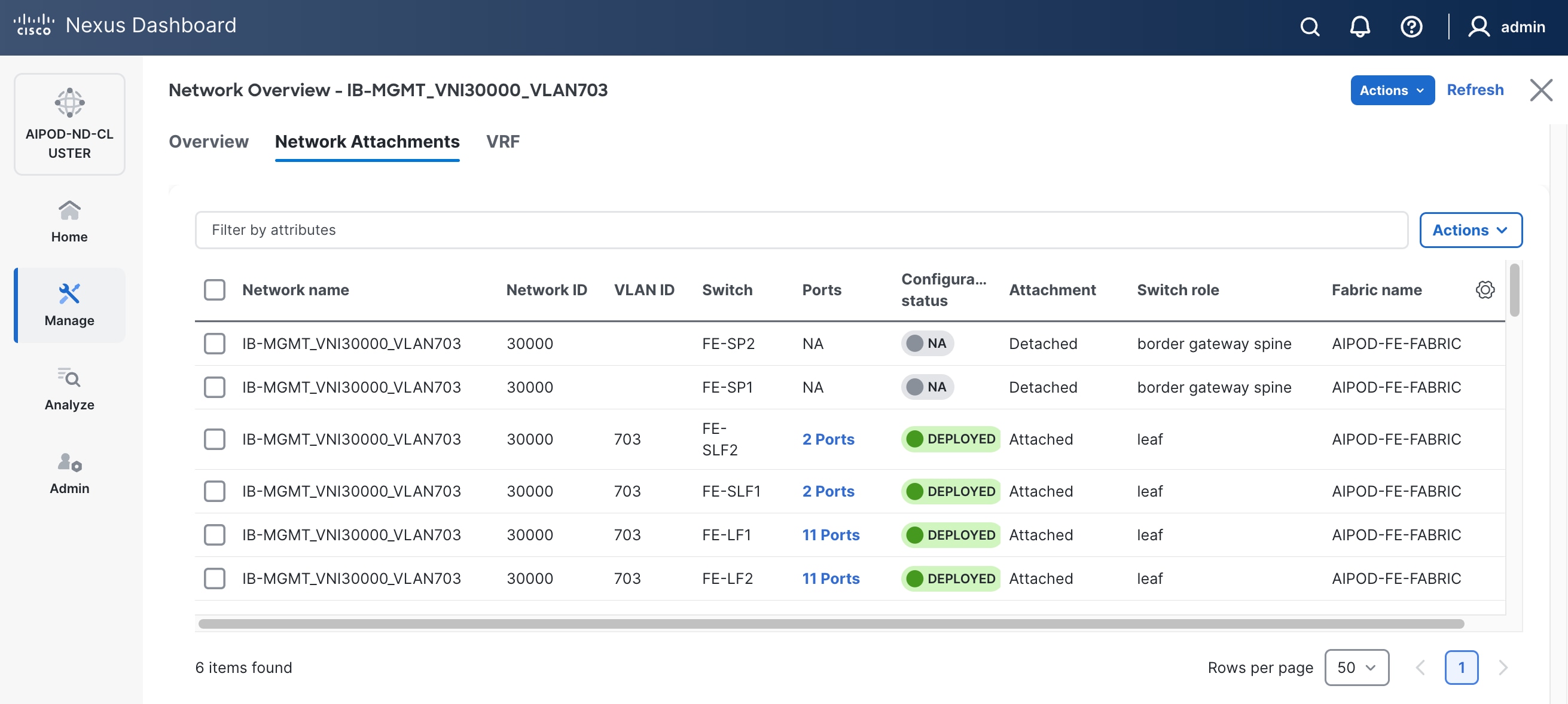

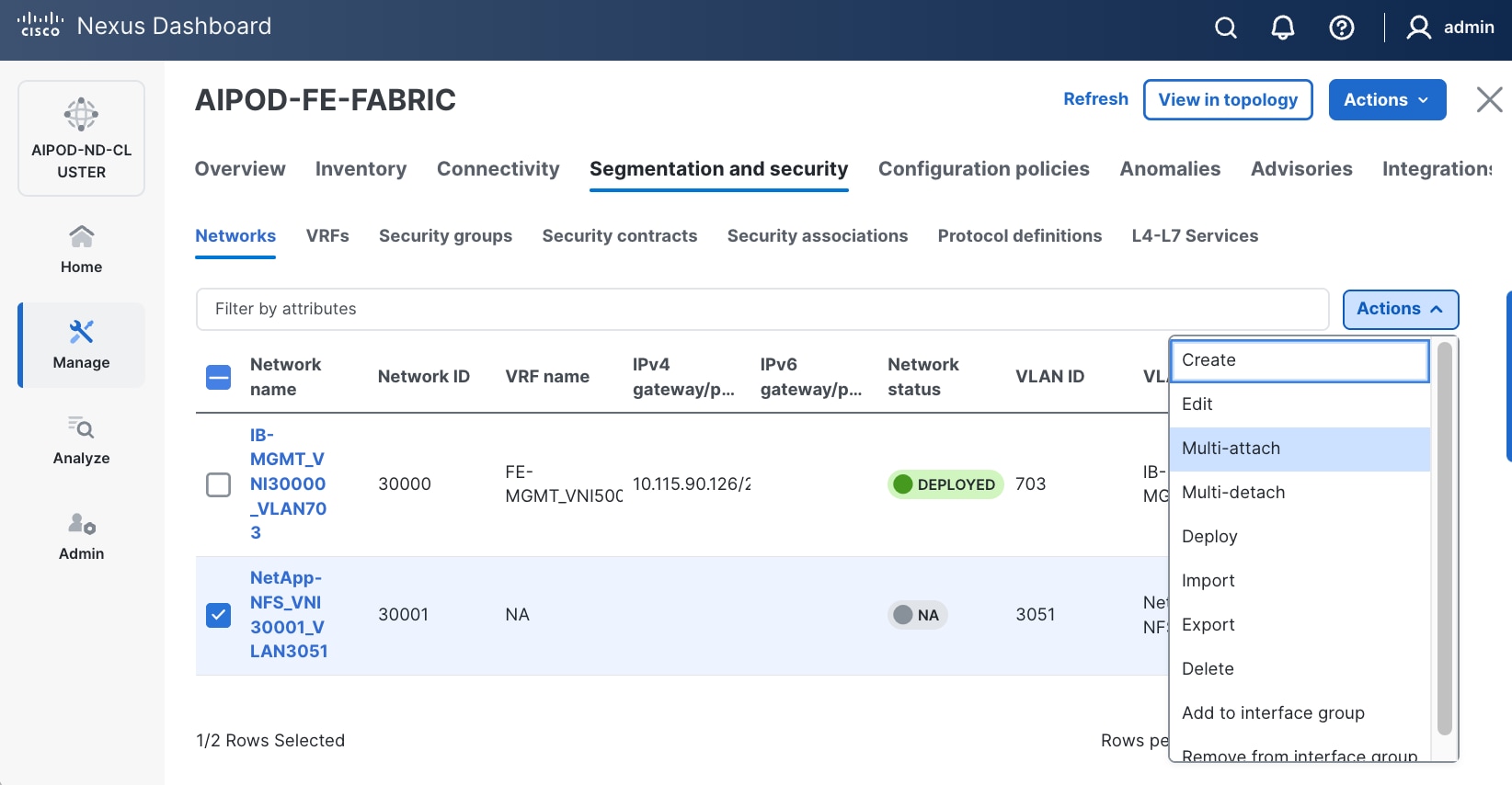

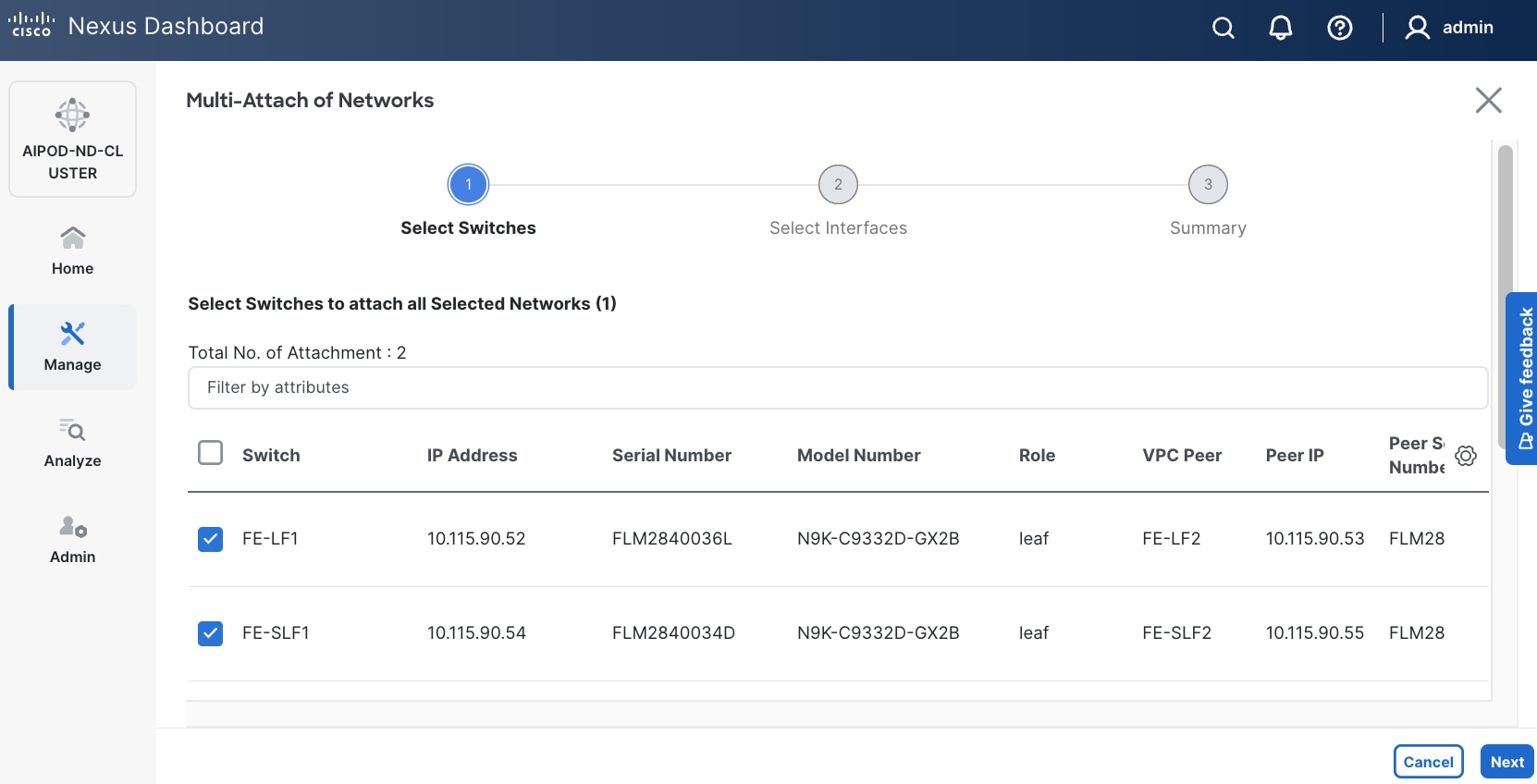

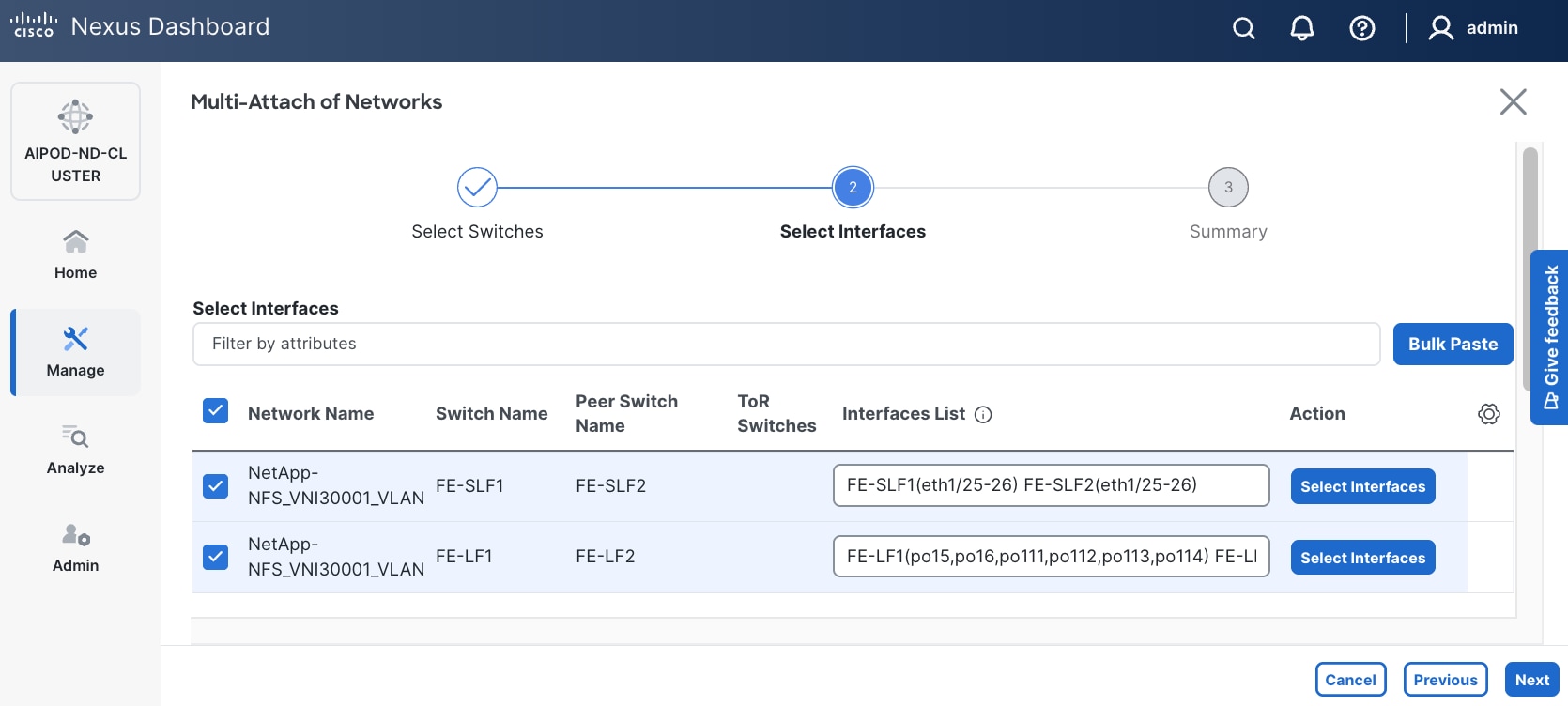

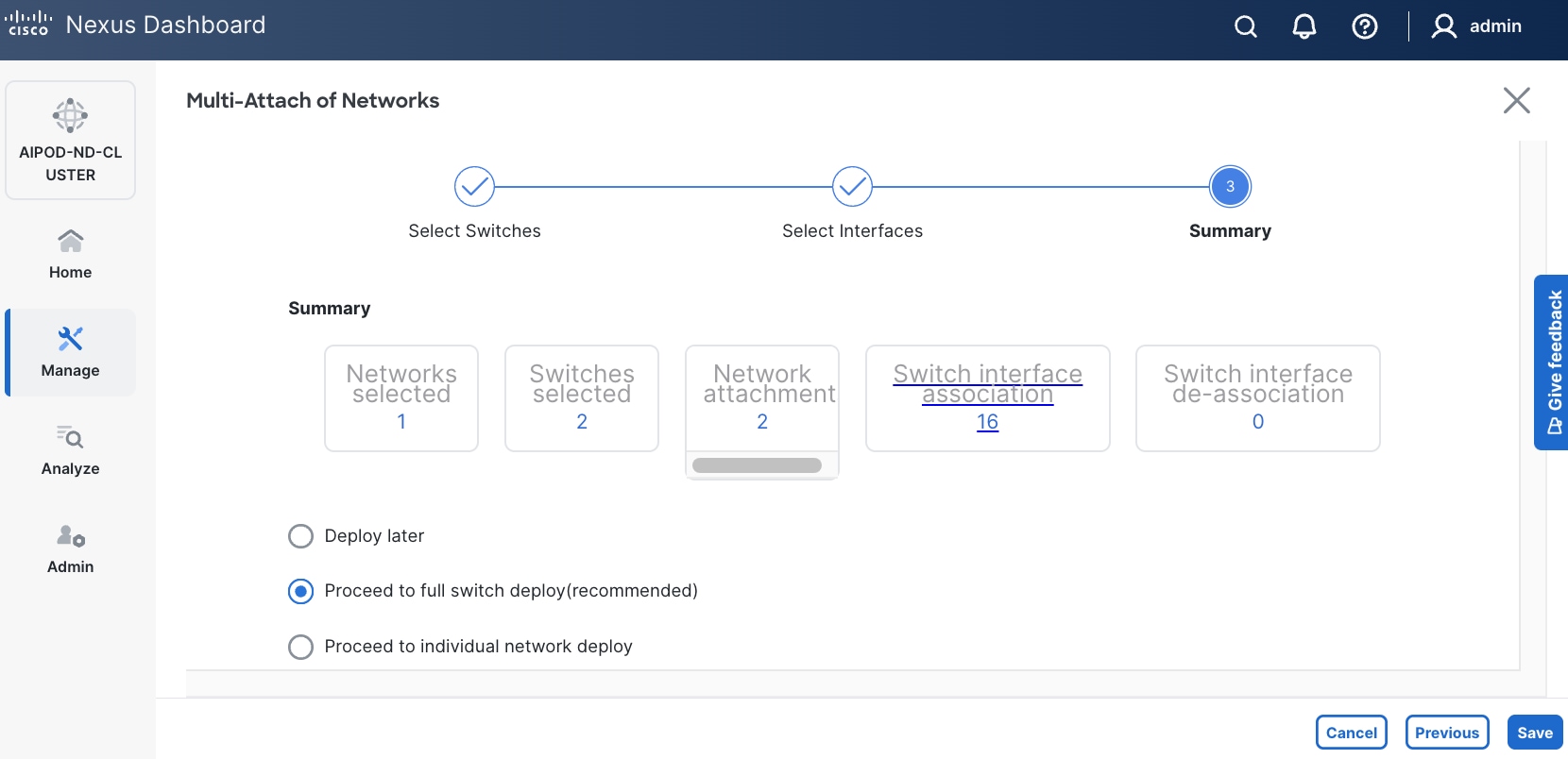

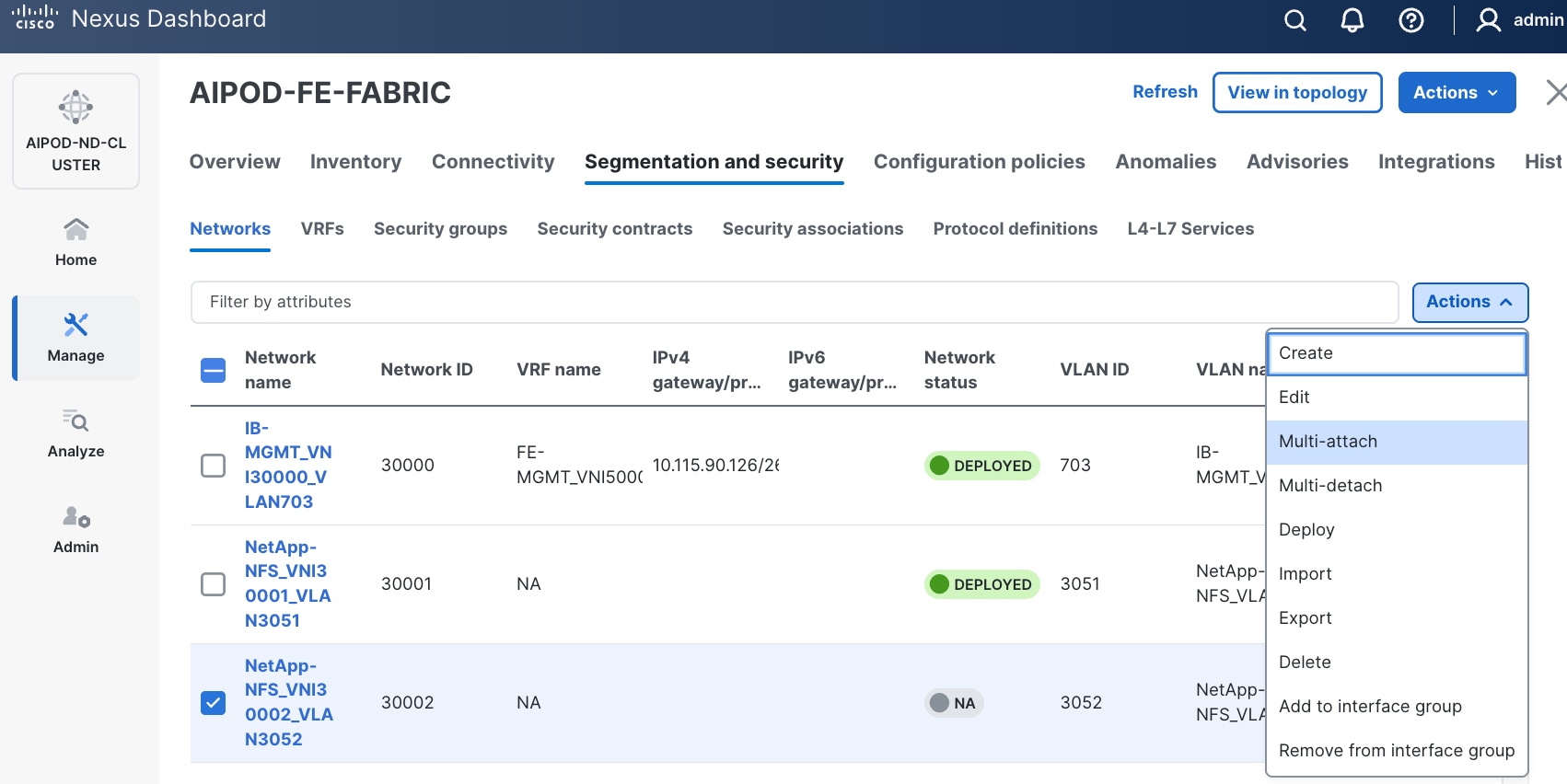

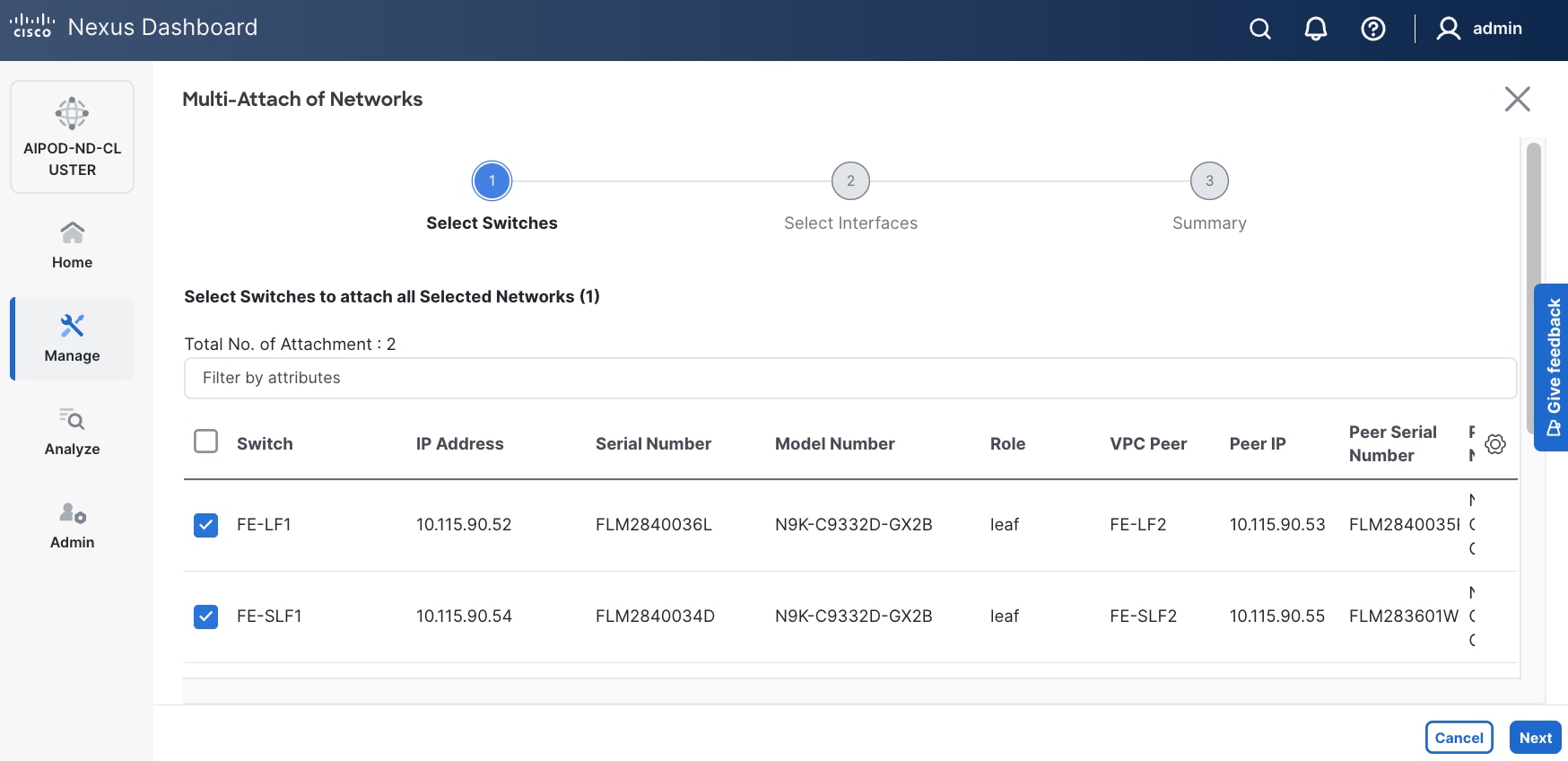

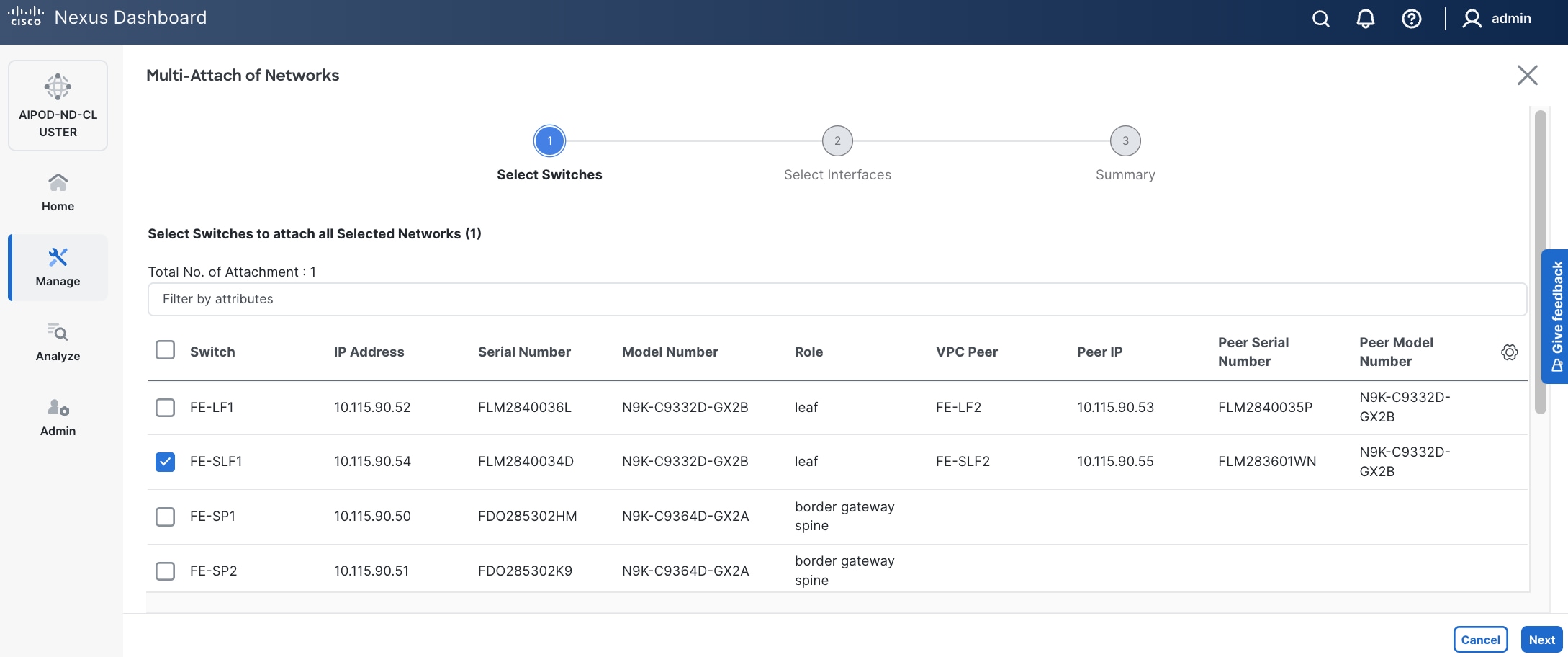

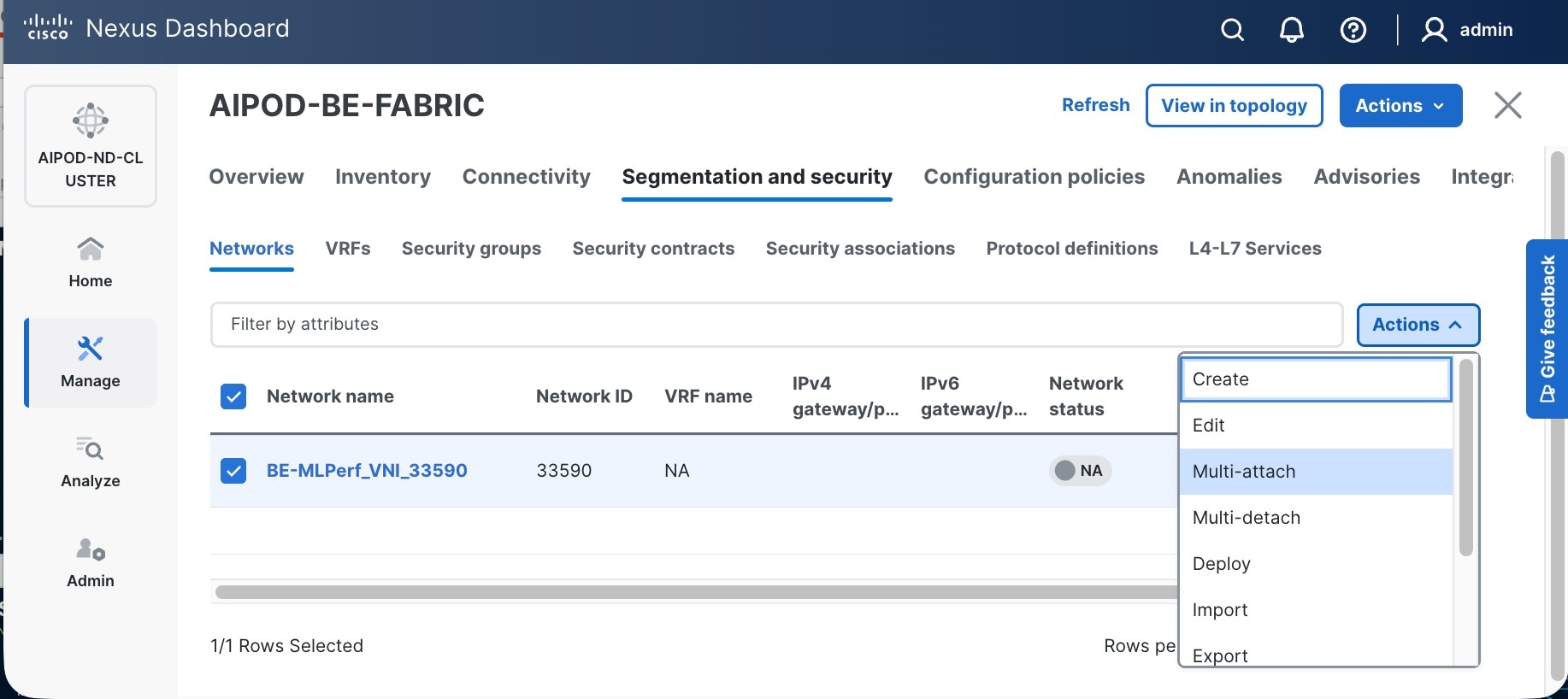

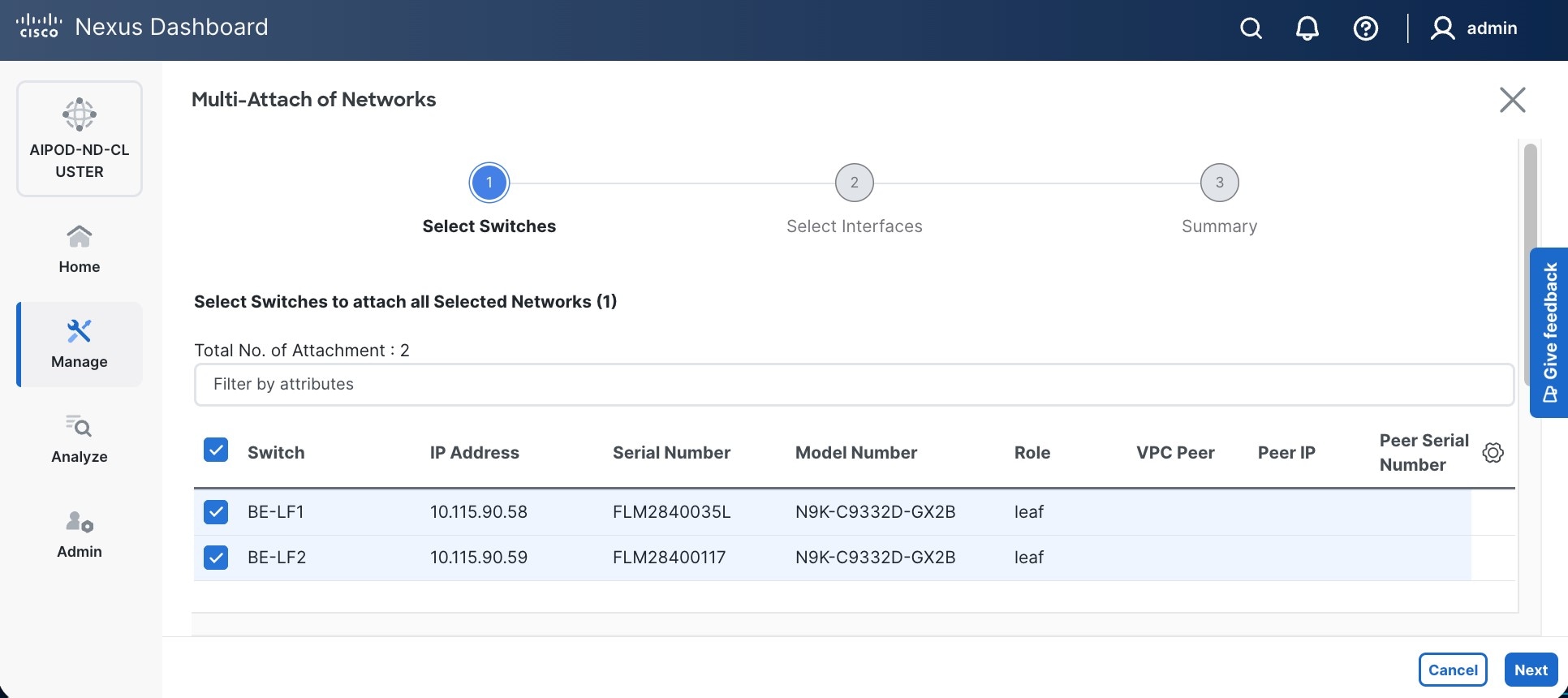

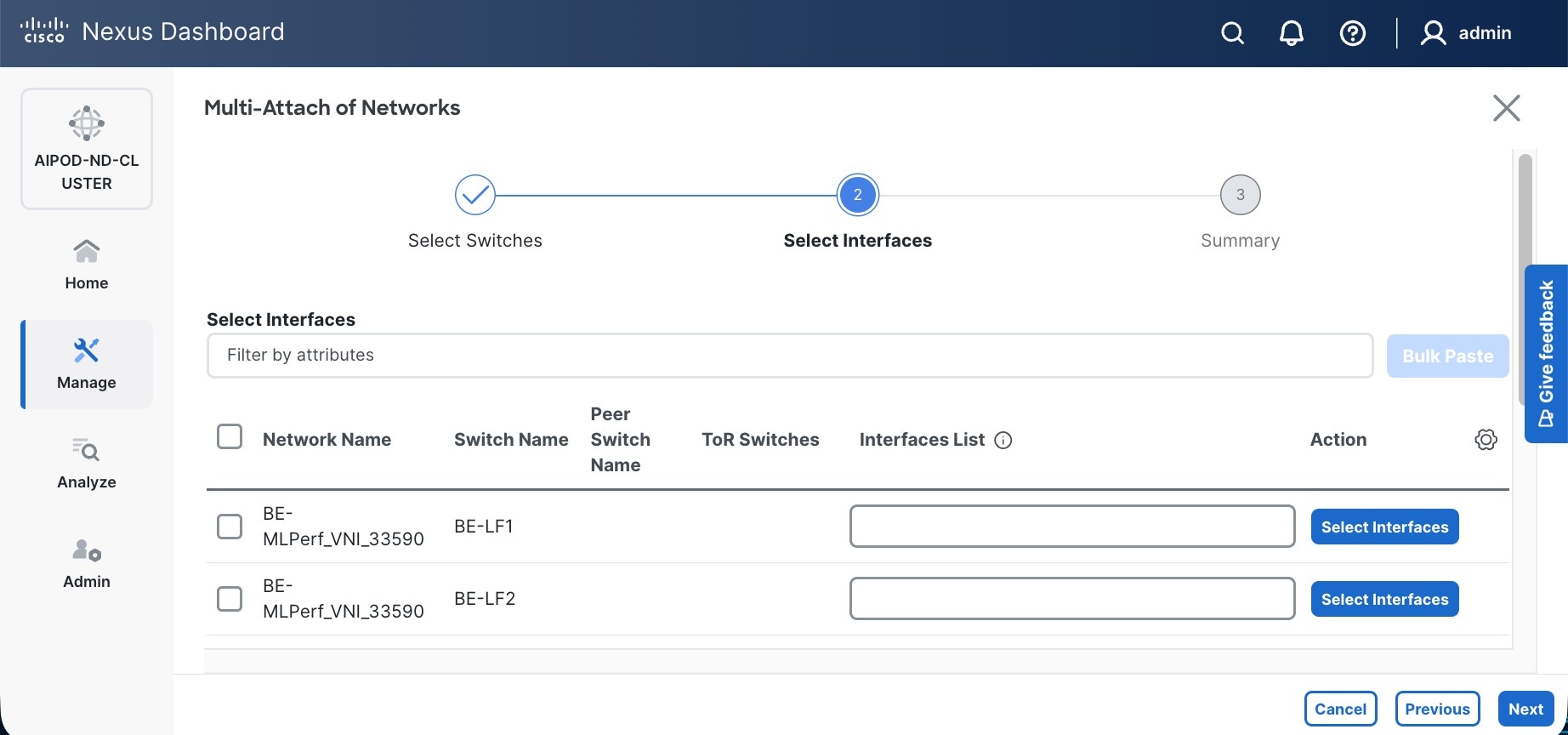

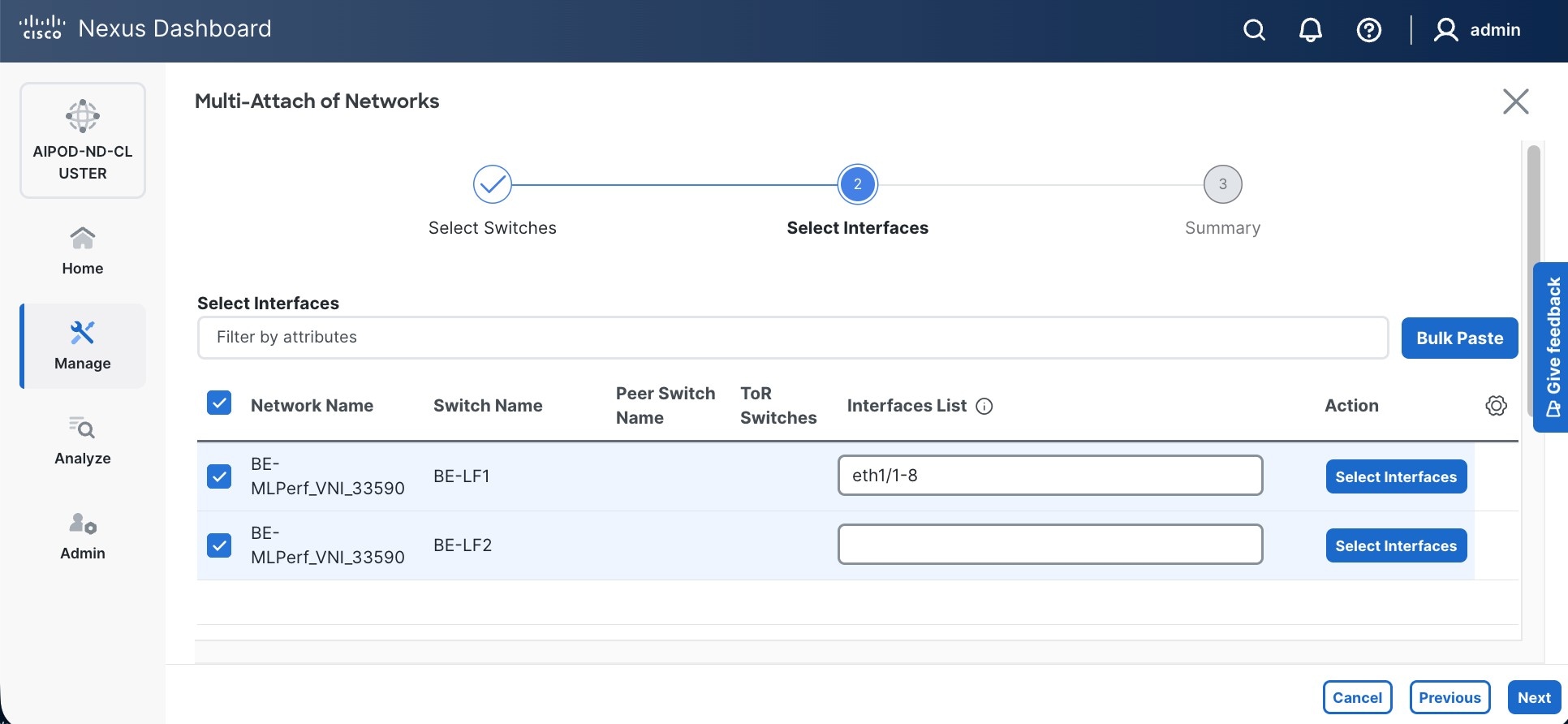

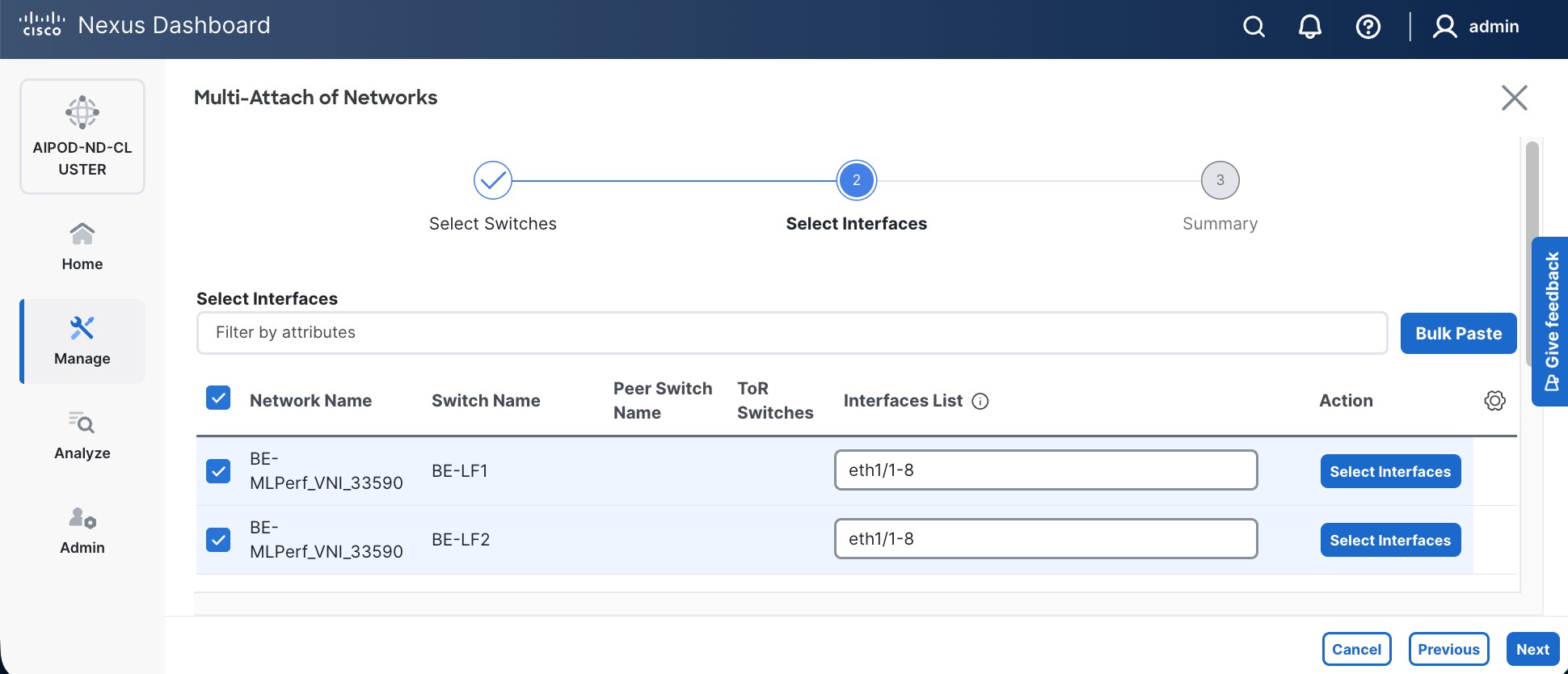

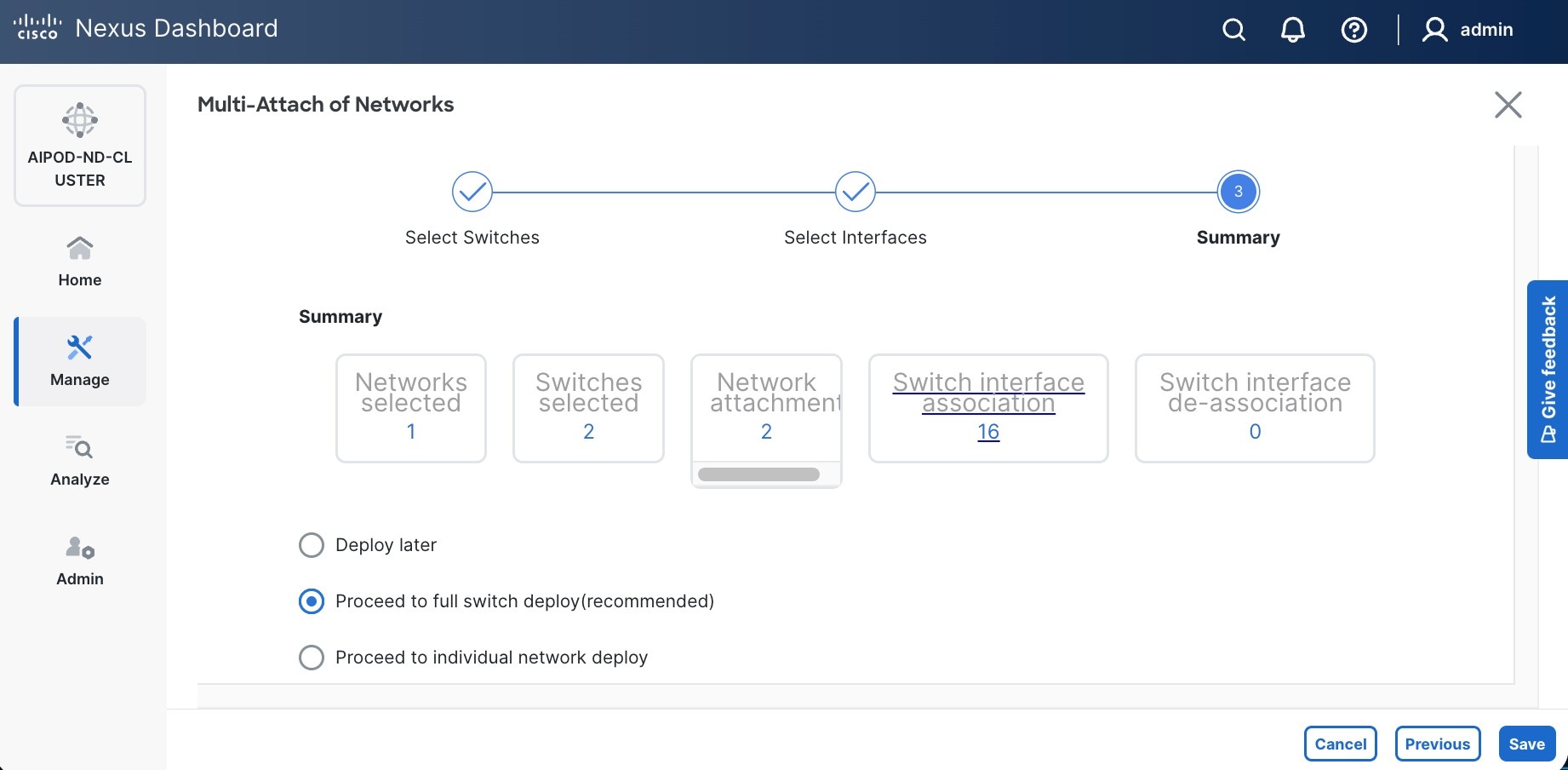

Step 9. Select newly created network and deploy it on both leaf pairs. Click the lower of the two Actions button and select Multi-attach from the list.

Step 10. Select the Leaf switch pairs. Enabling this network on storage leaf pairs, as shown below, may not be necessary in all deployments.

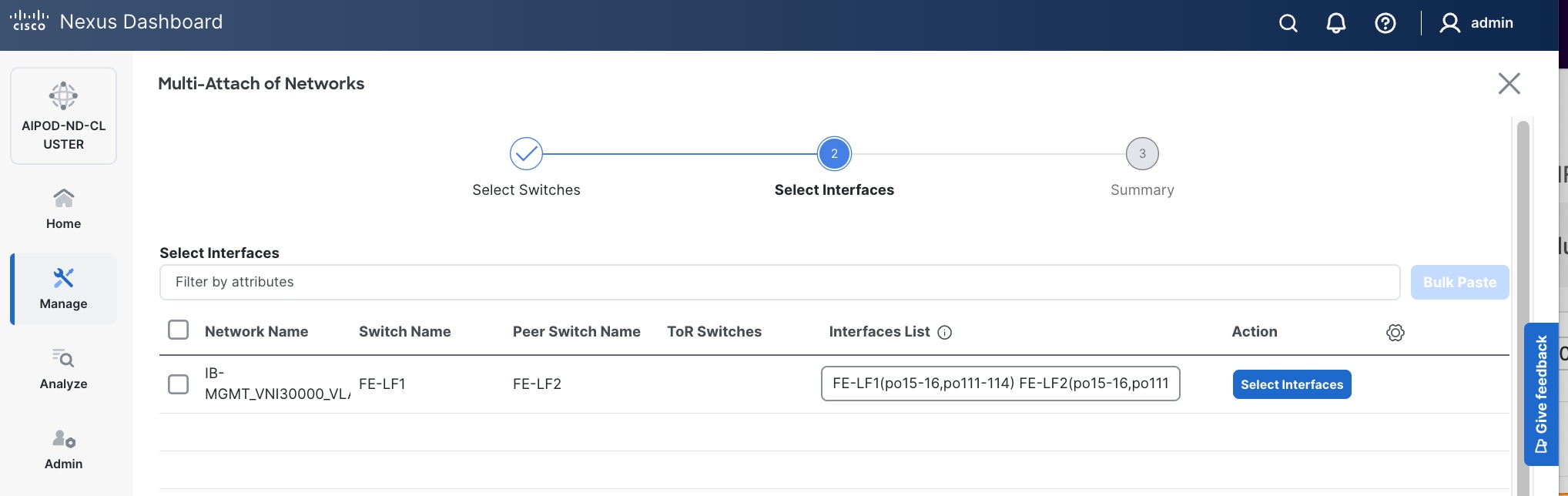

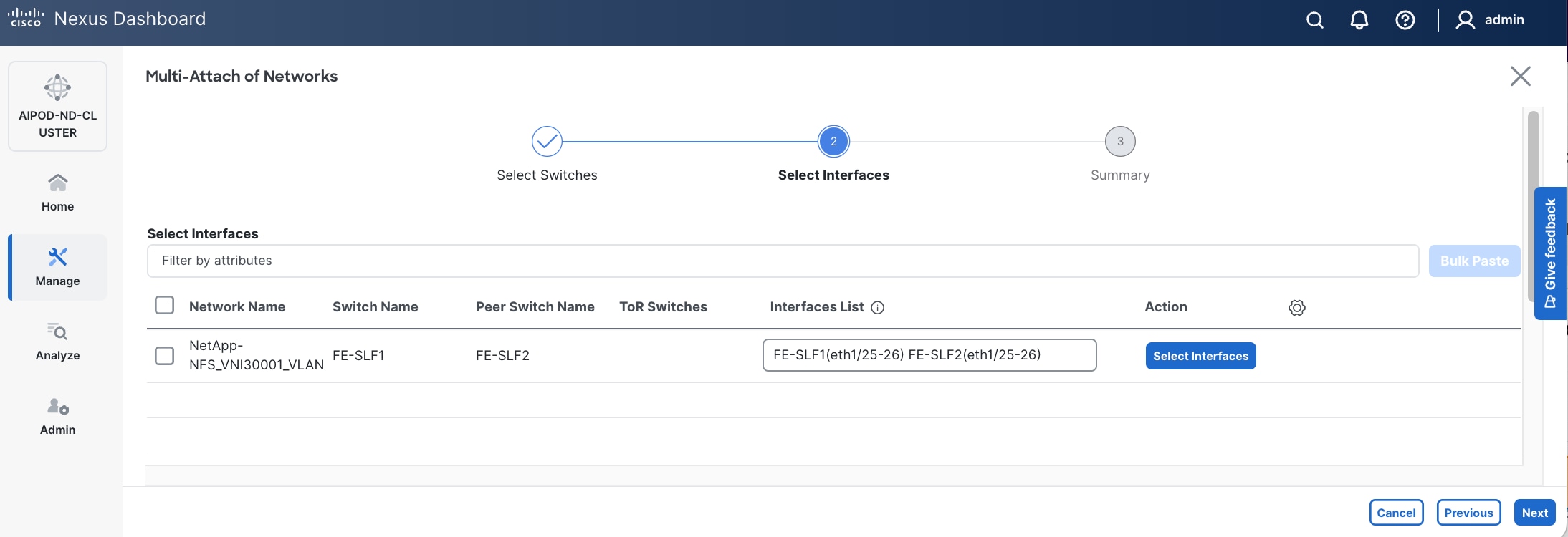

Step 11. Click Next.

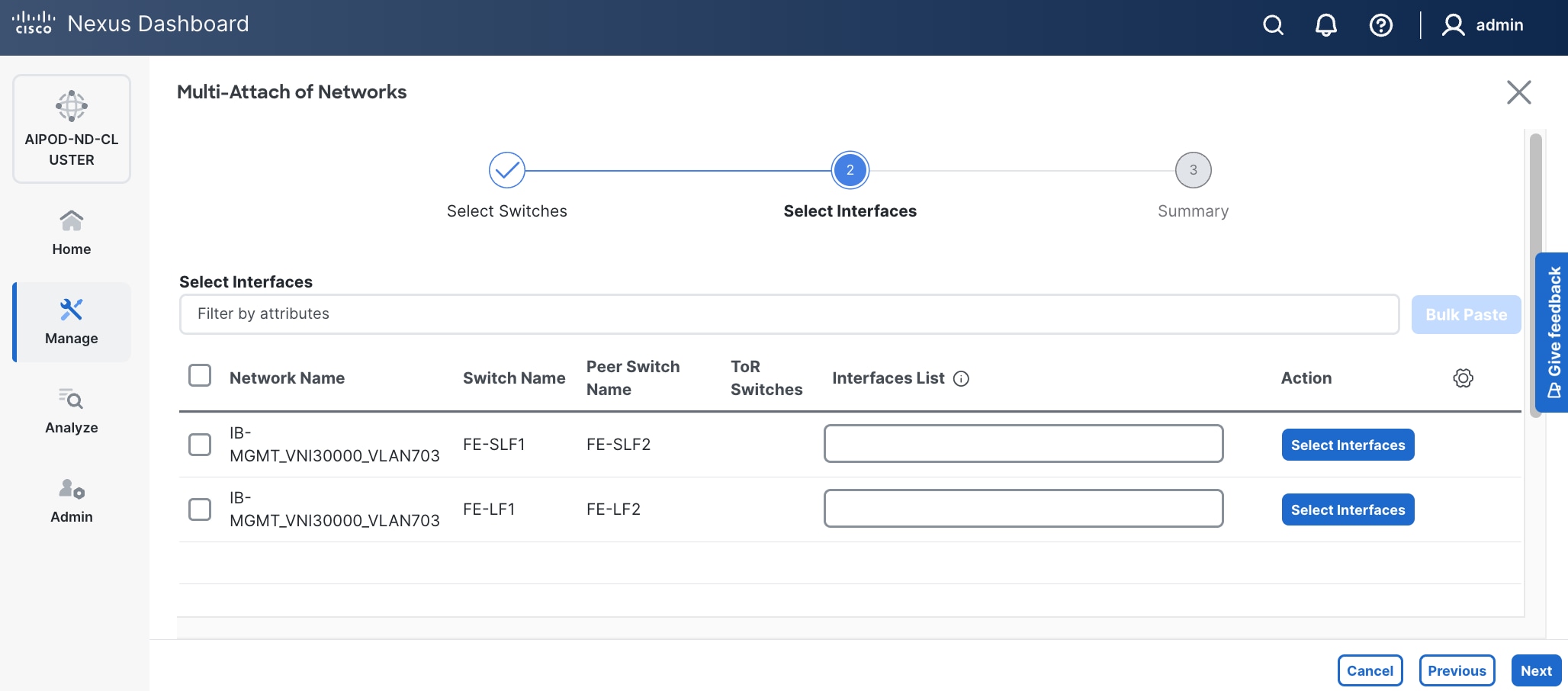

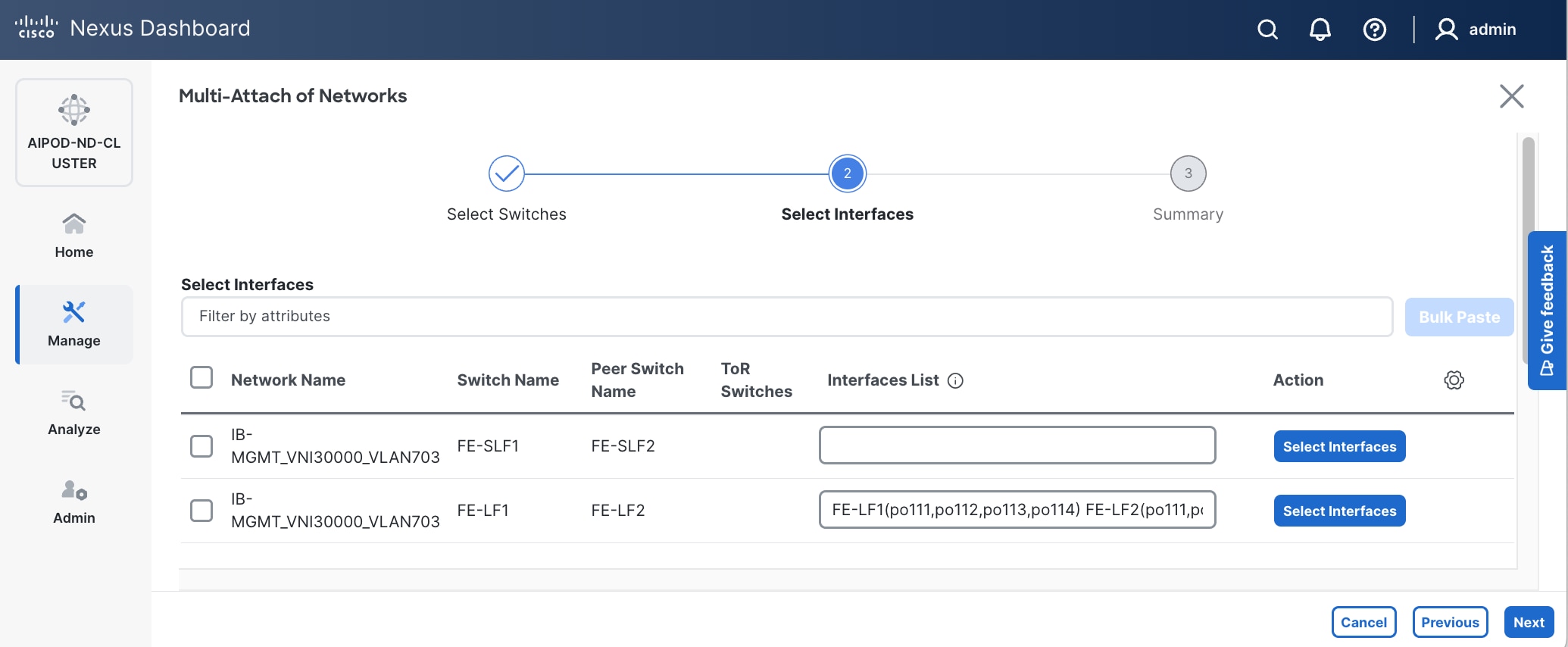

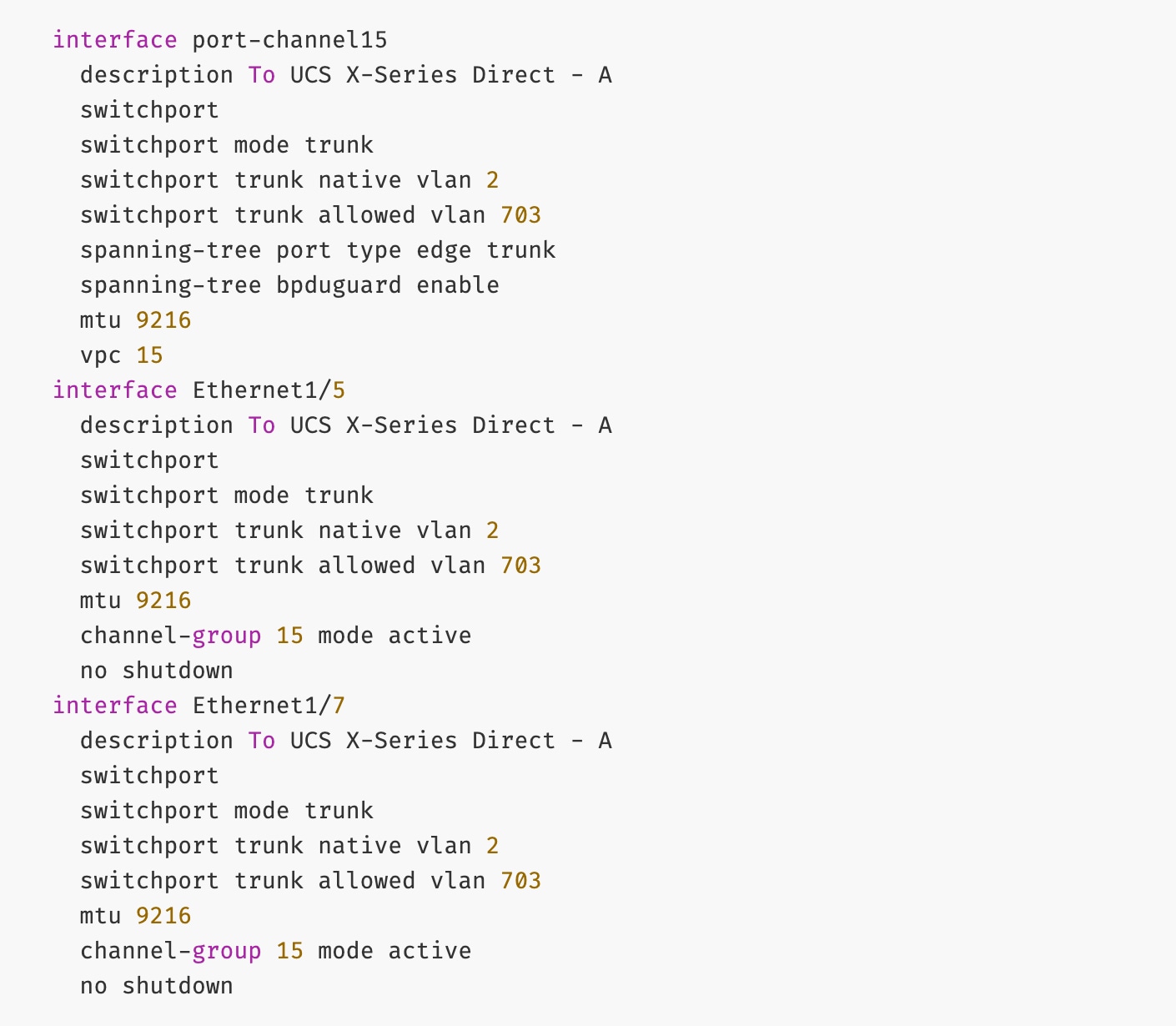

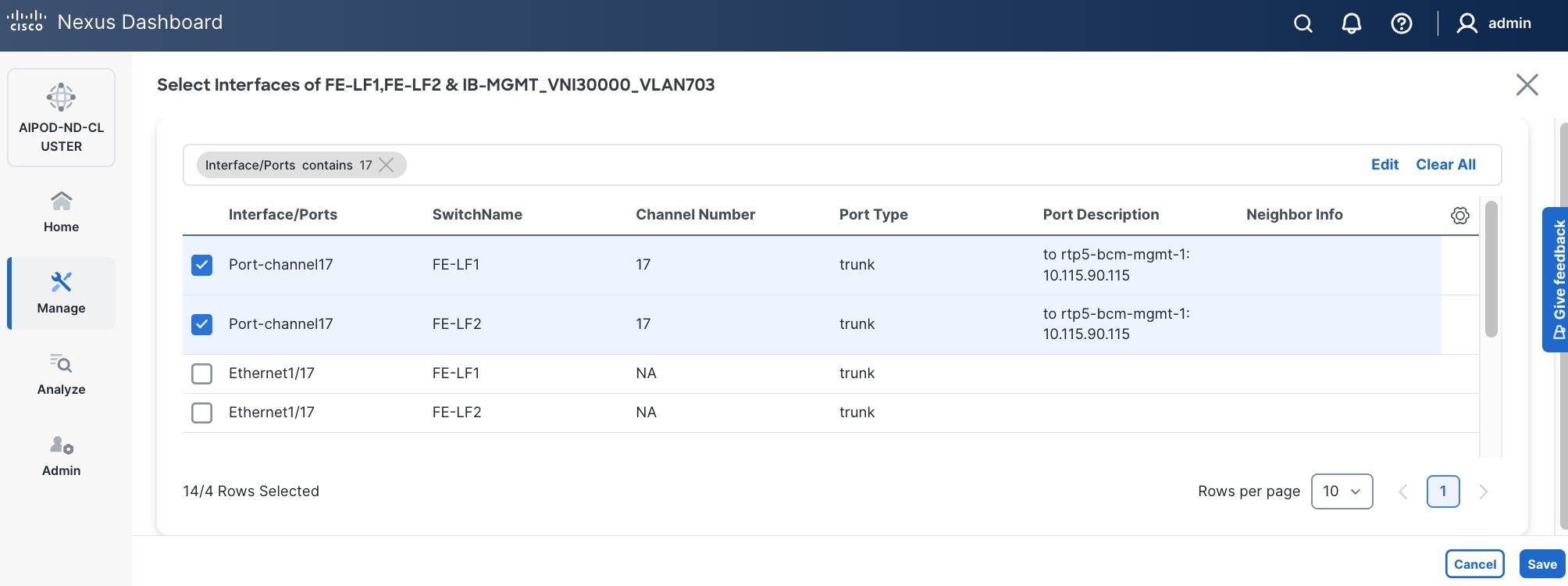

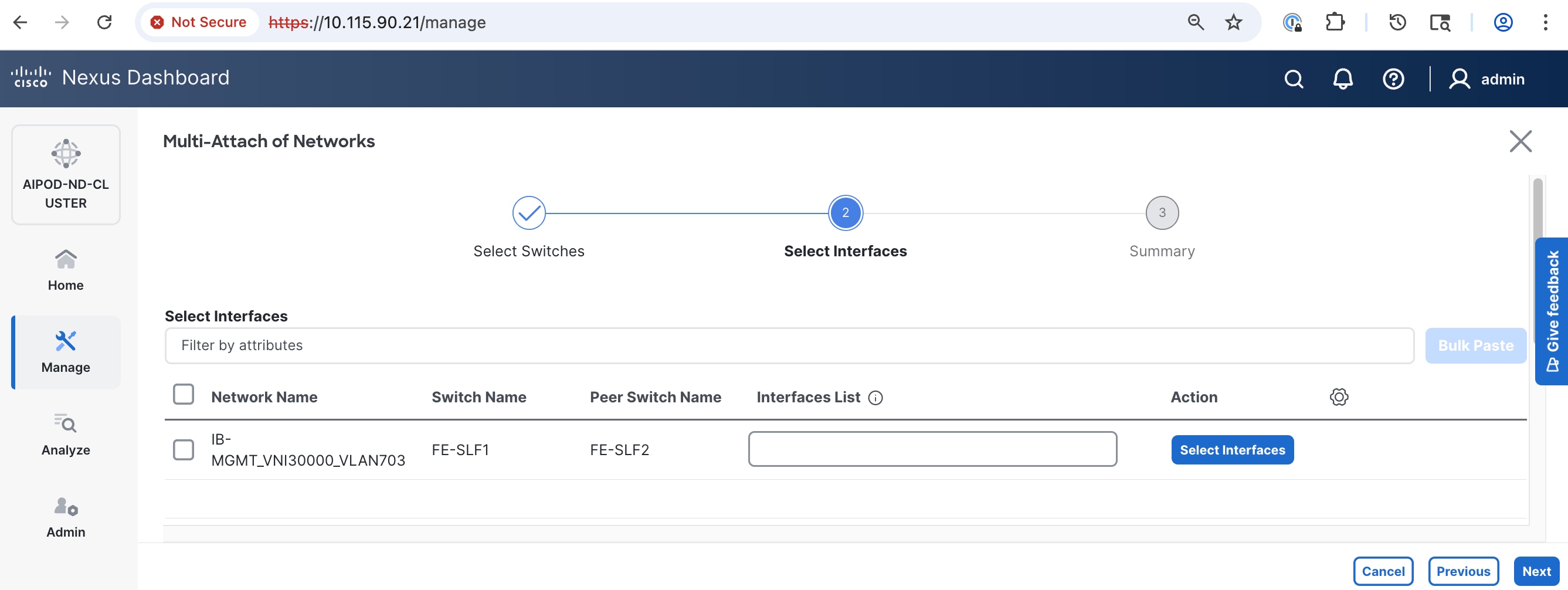

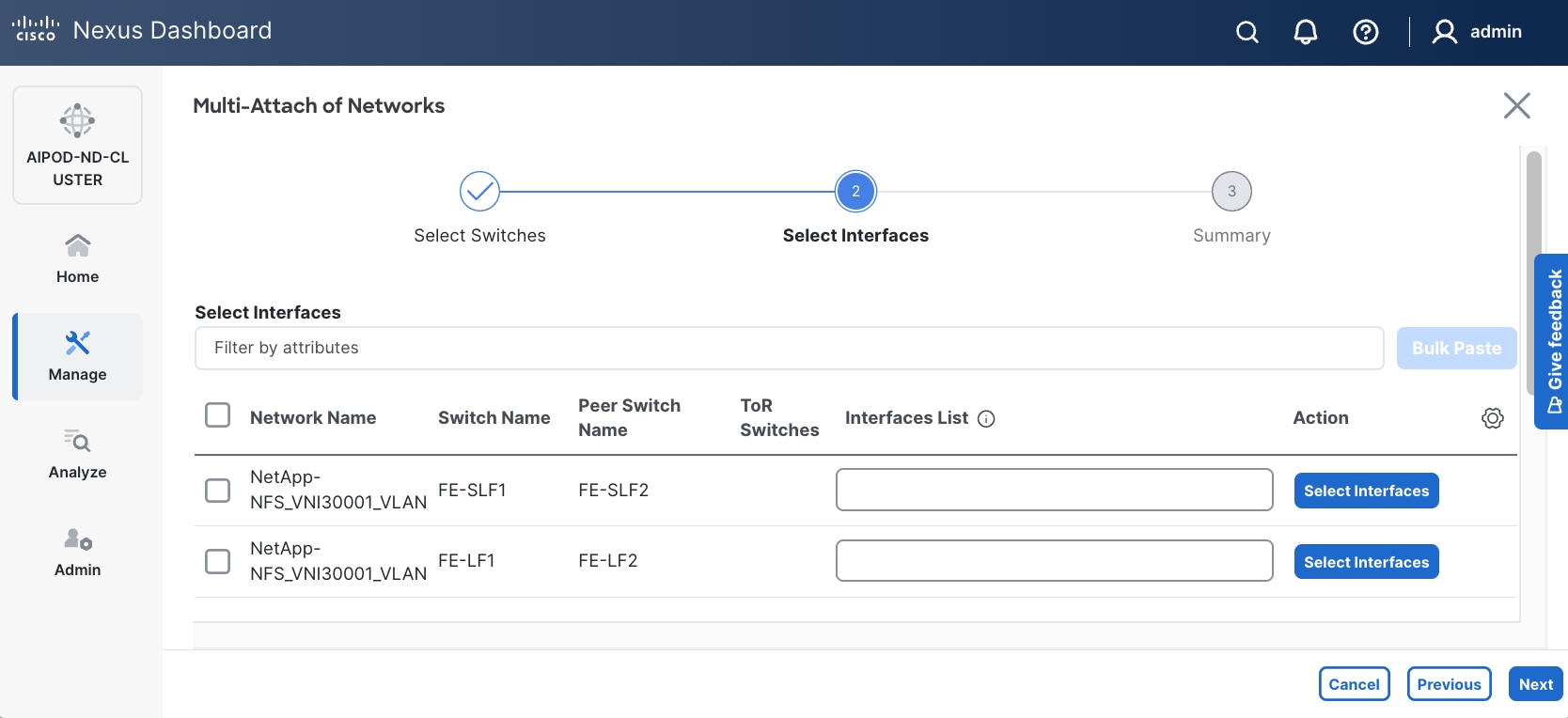

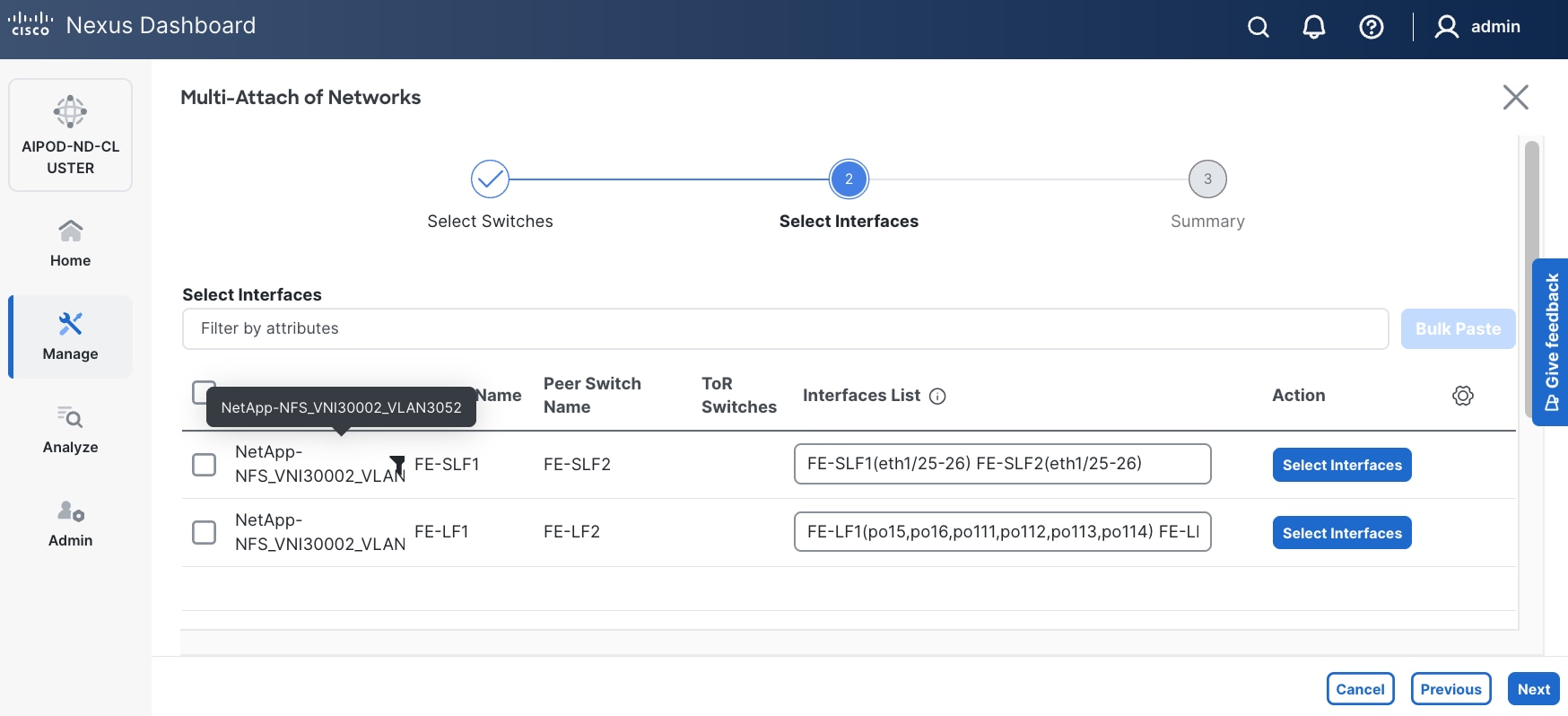

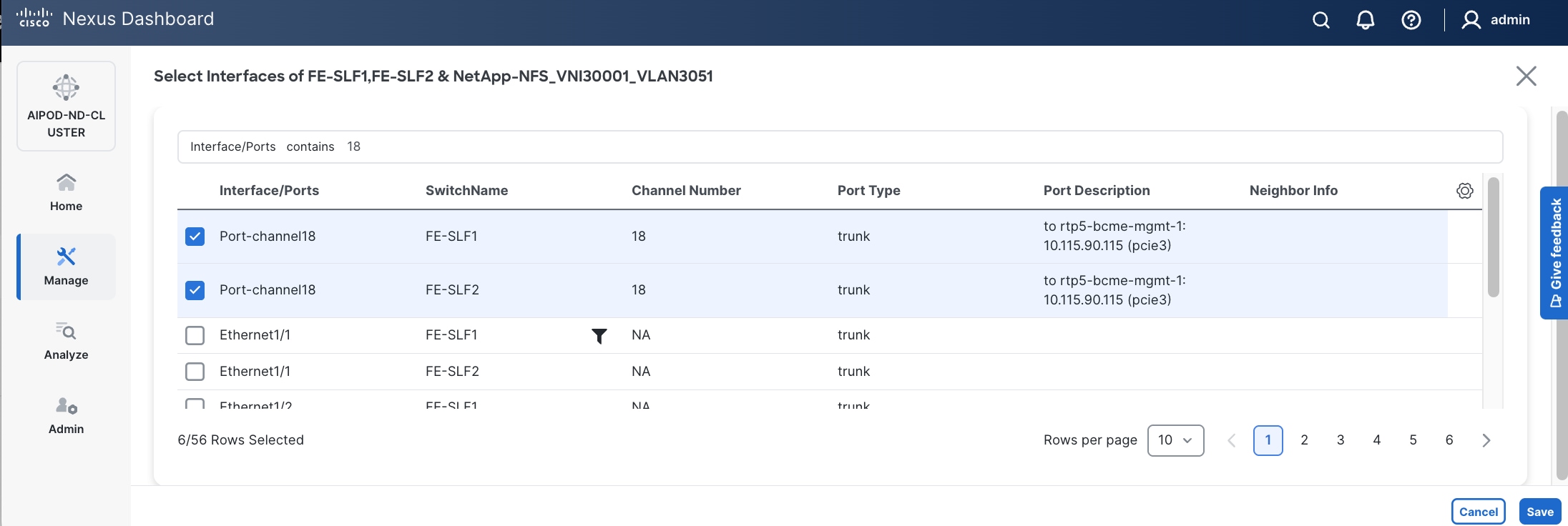

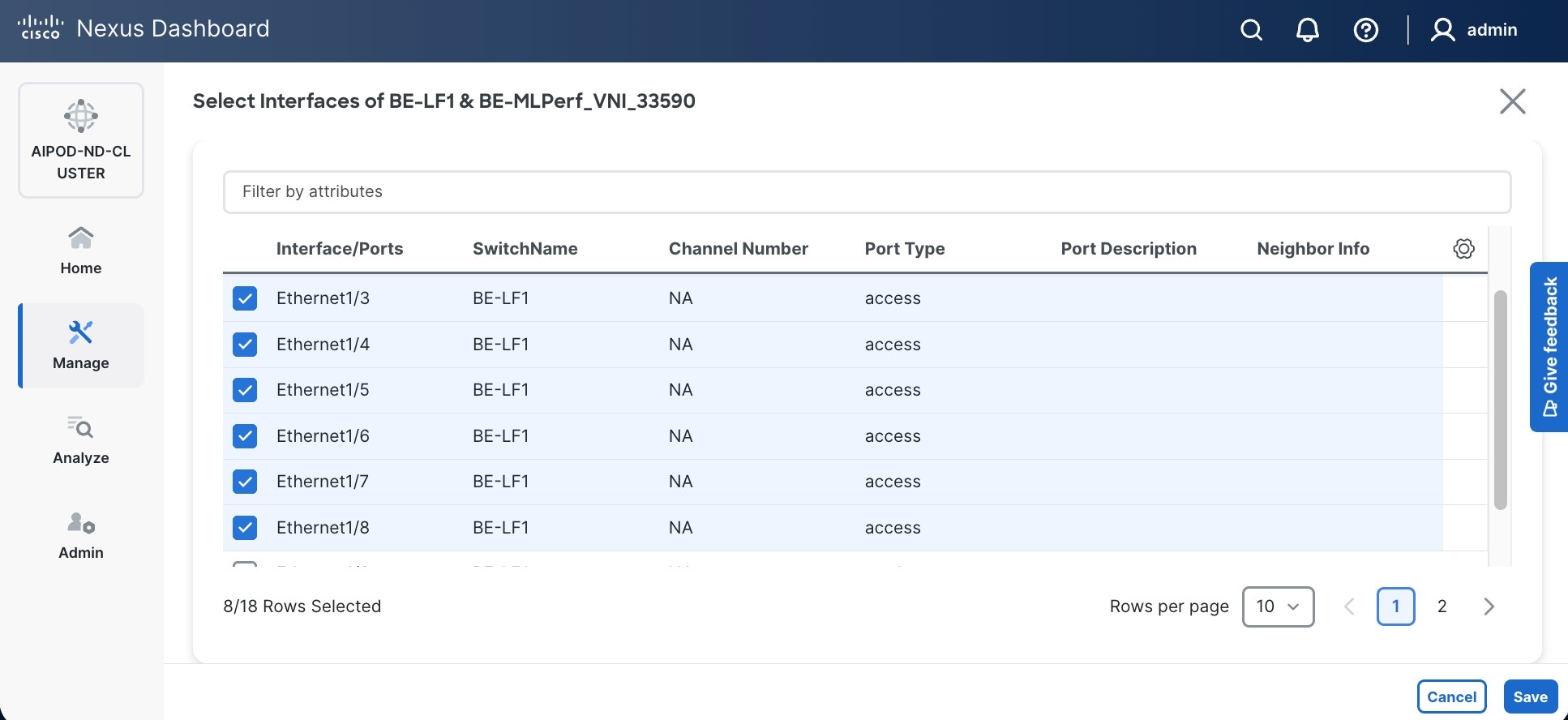

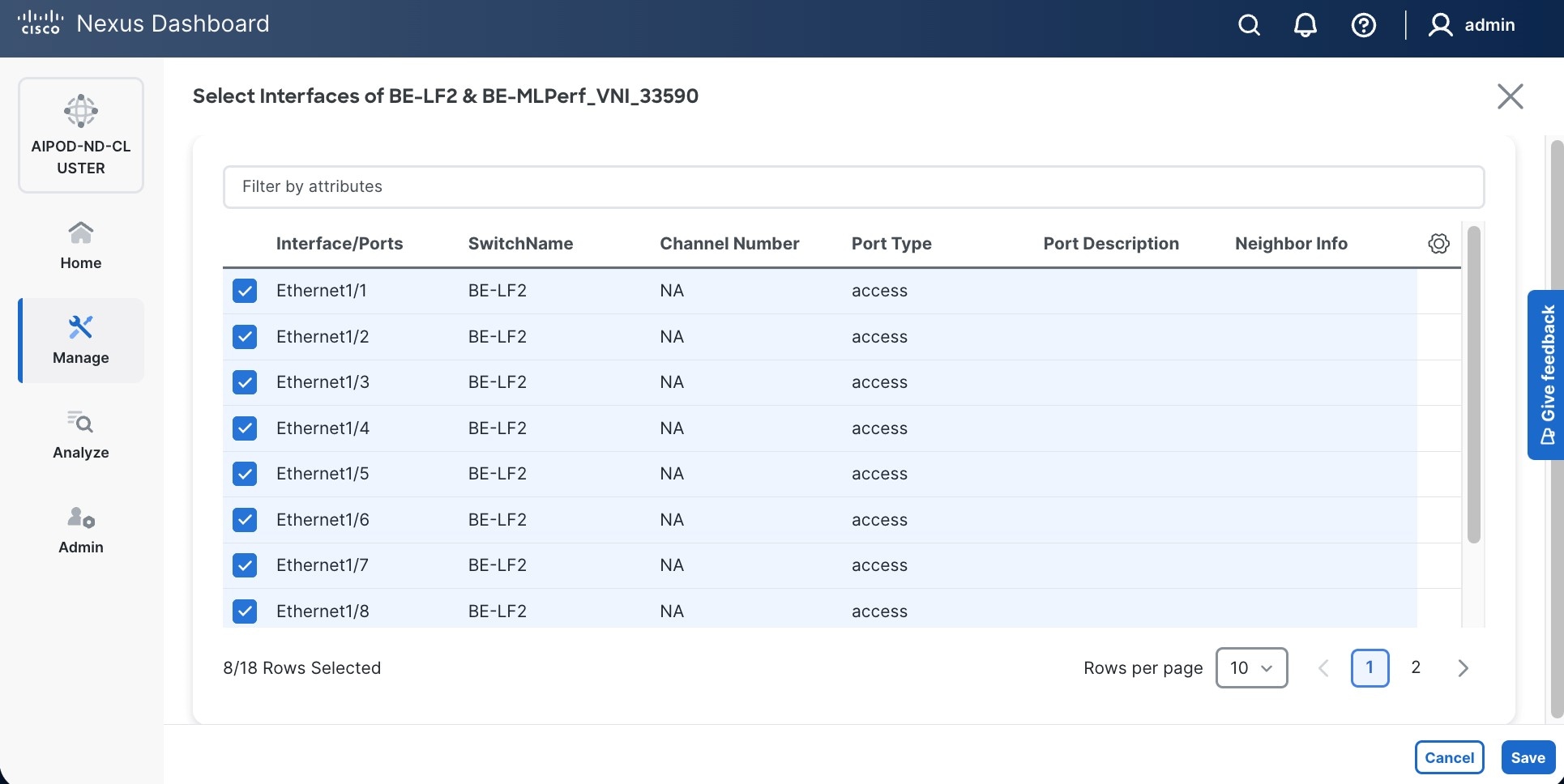

Step 12. Select each switch pair in the list and click Select interfaces on the right to deploy this network as a trunked VLAN (VLAN 703) on the selected interfaces. Select the interfaces on the compute leaf switches that connect to the UCS GPU nodes. Additional interfaces can be added later as needed.

Step 13. Click Next.

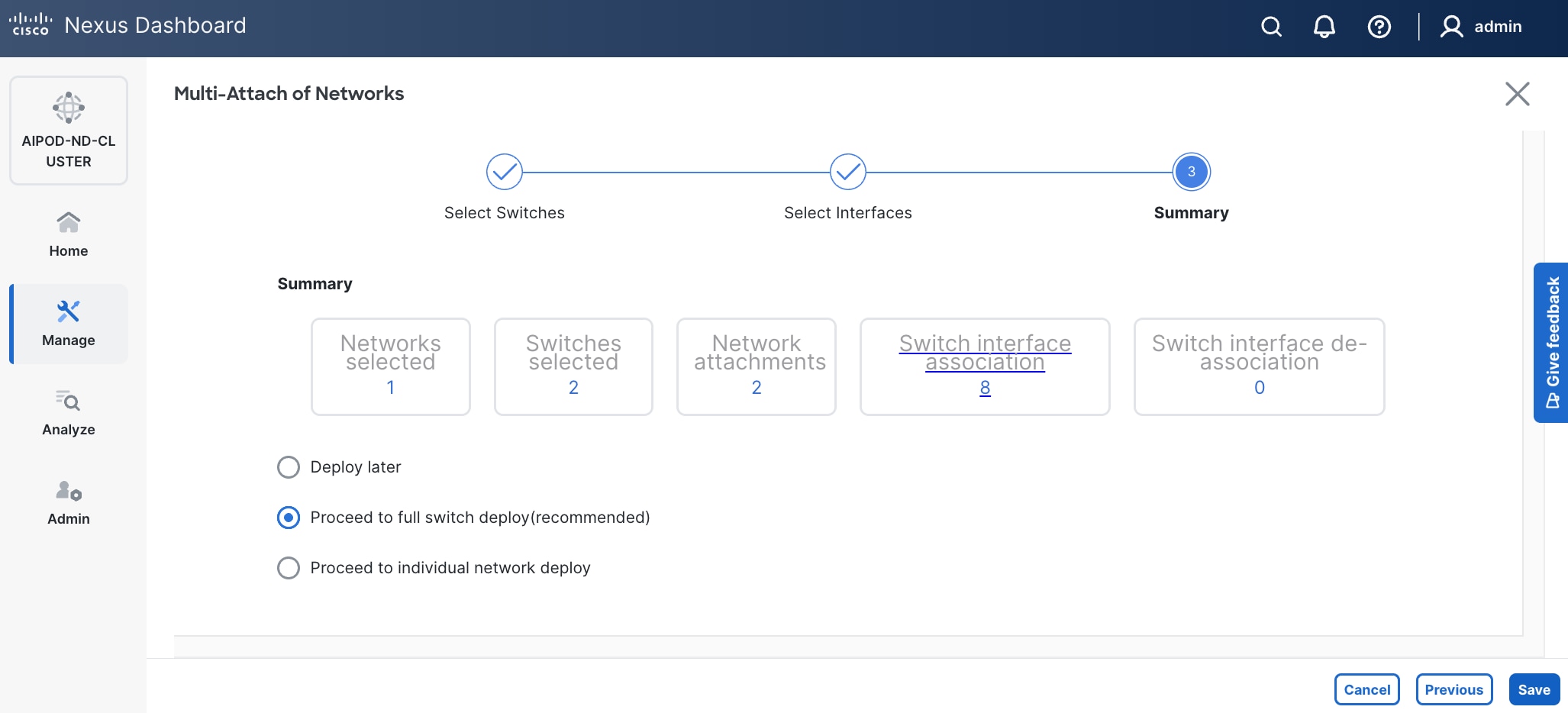

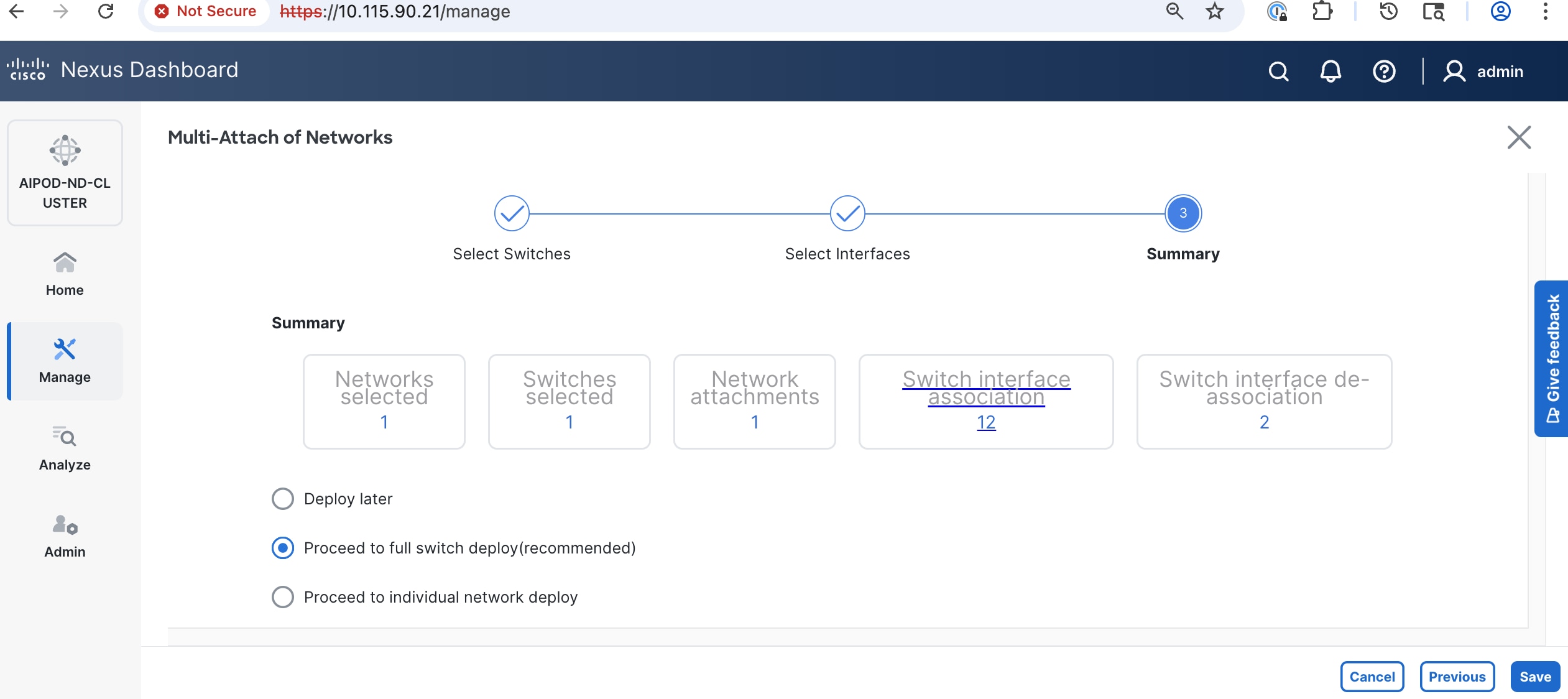

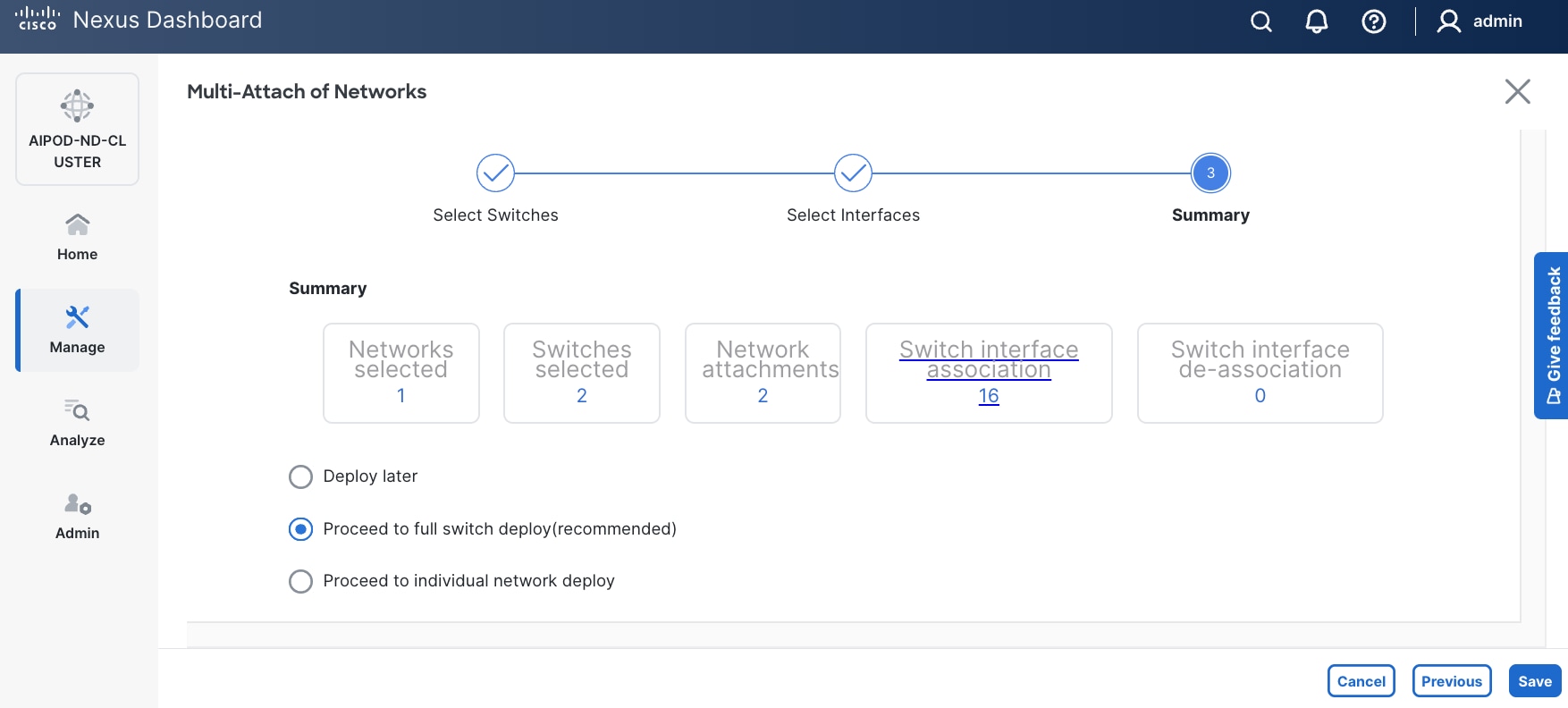

Step 14. Click Save.

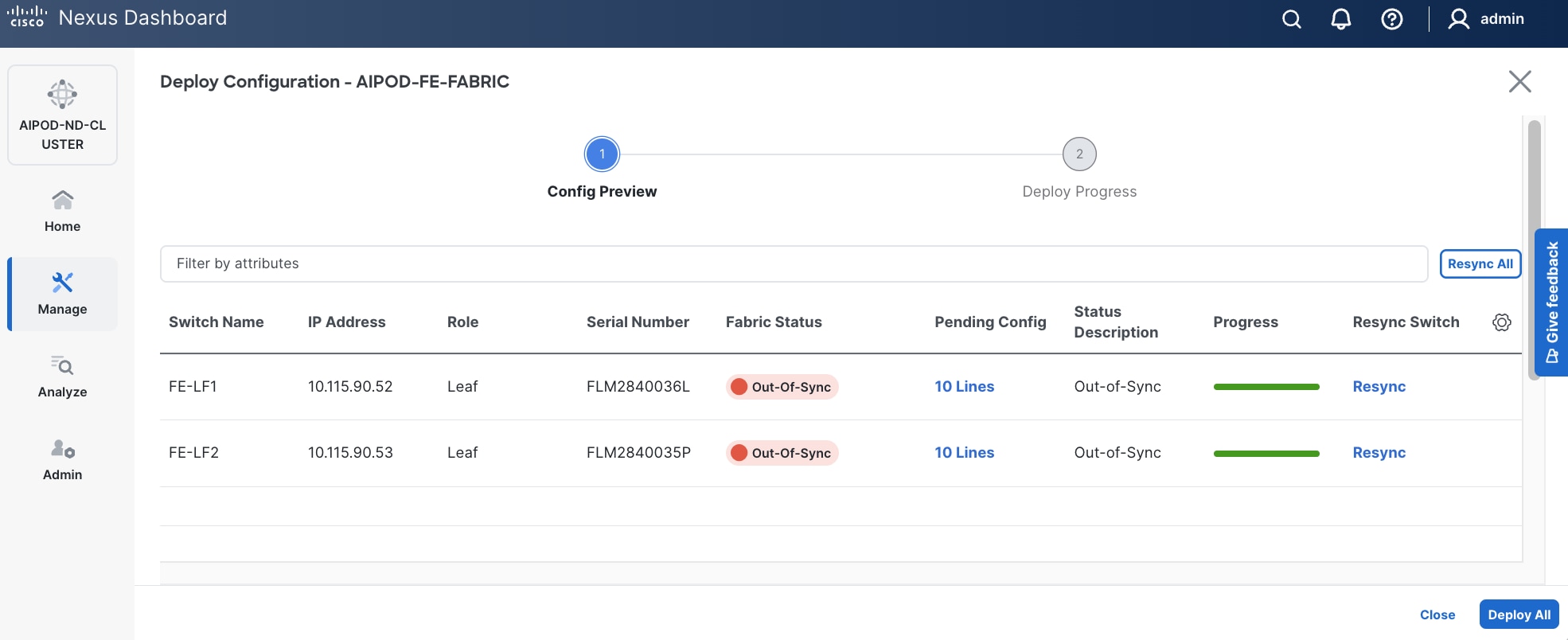

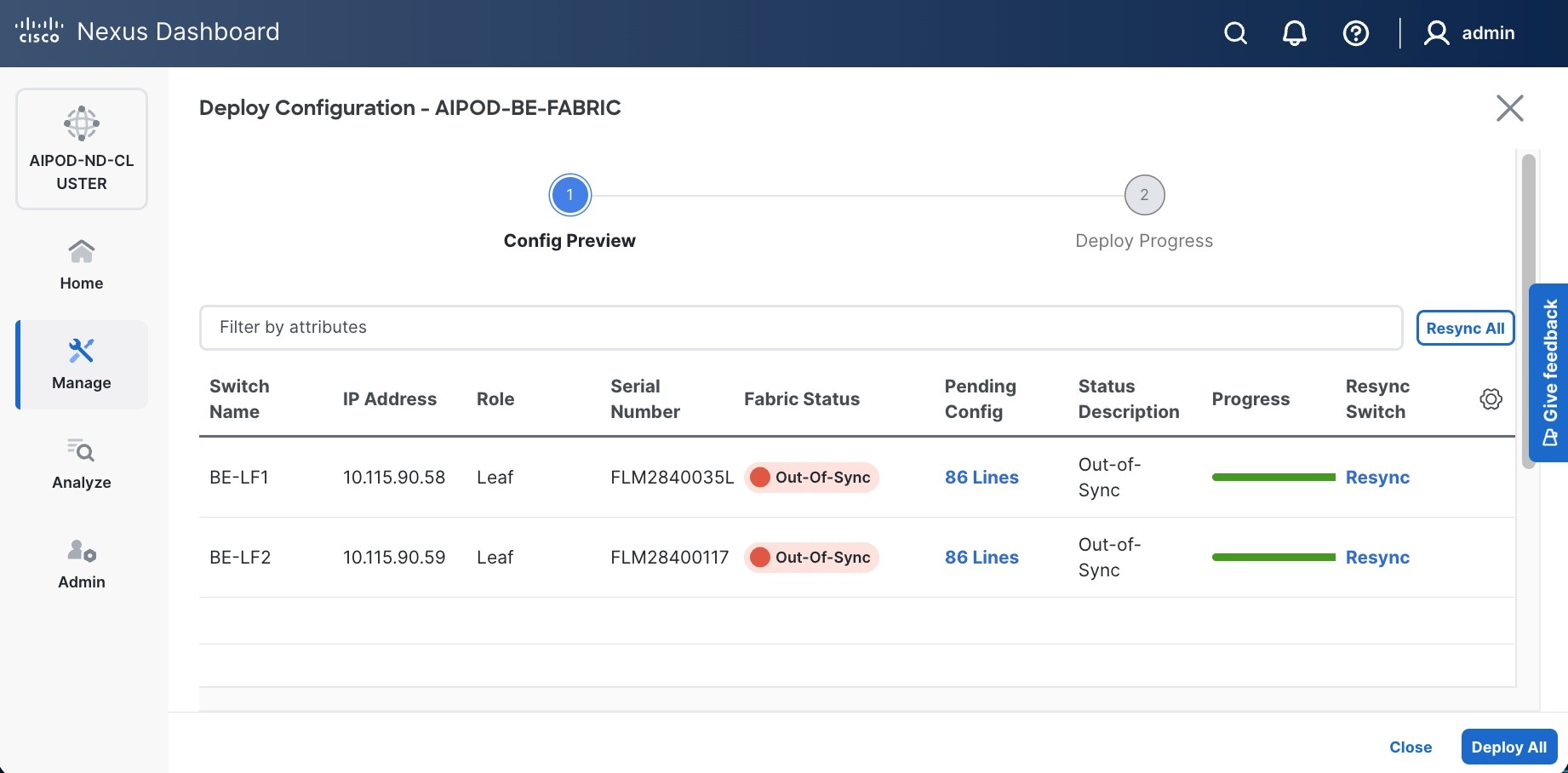

Step 15. Click Pending Config to see the configuration being deployed. The pending configuration on one leaf switch is provided as a reference at the end.

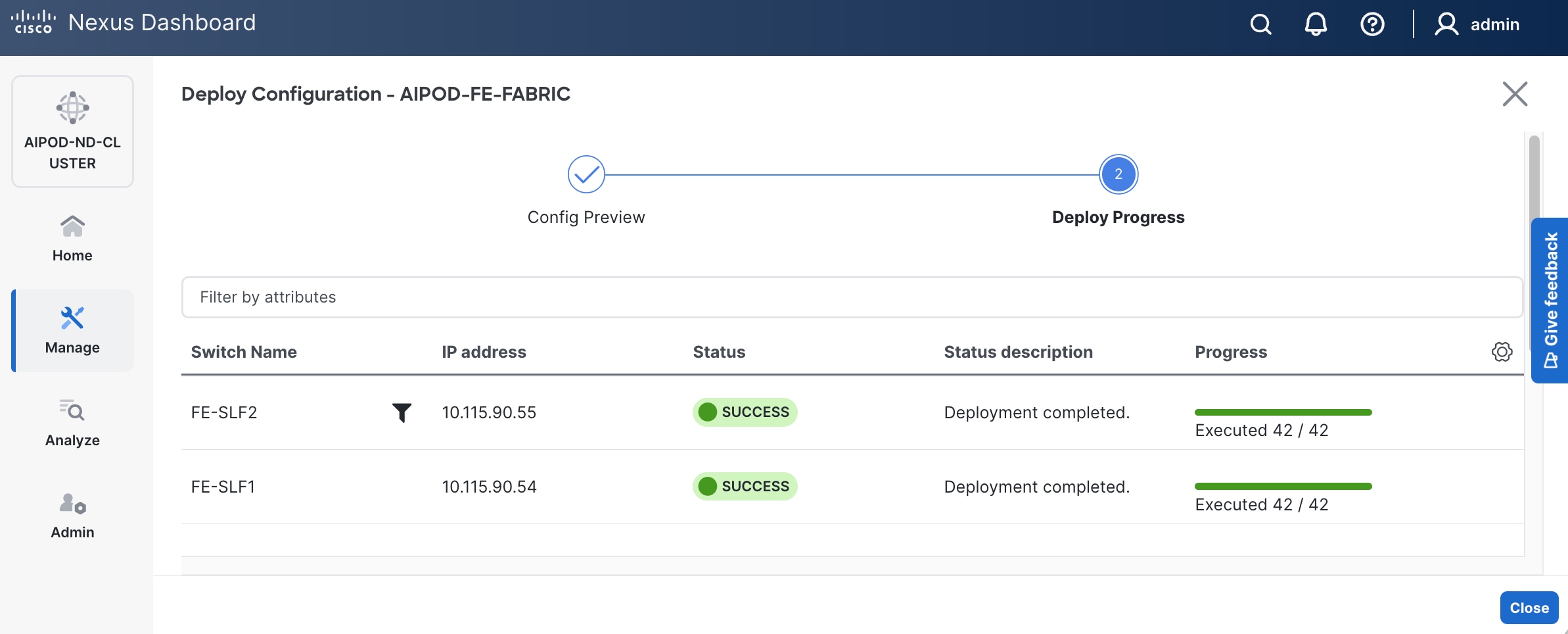

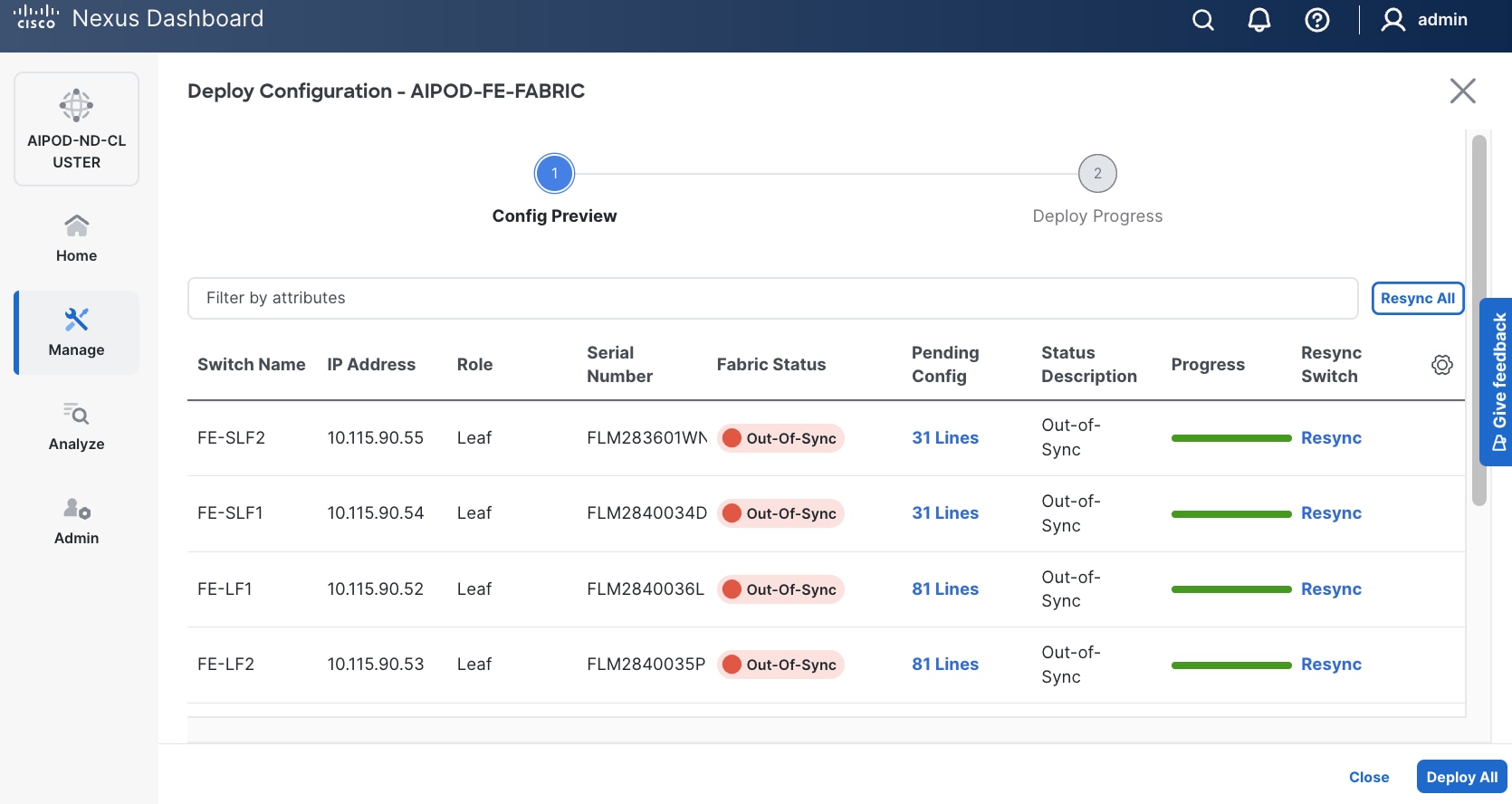

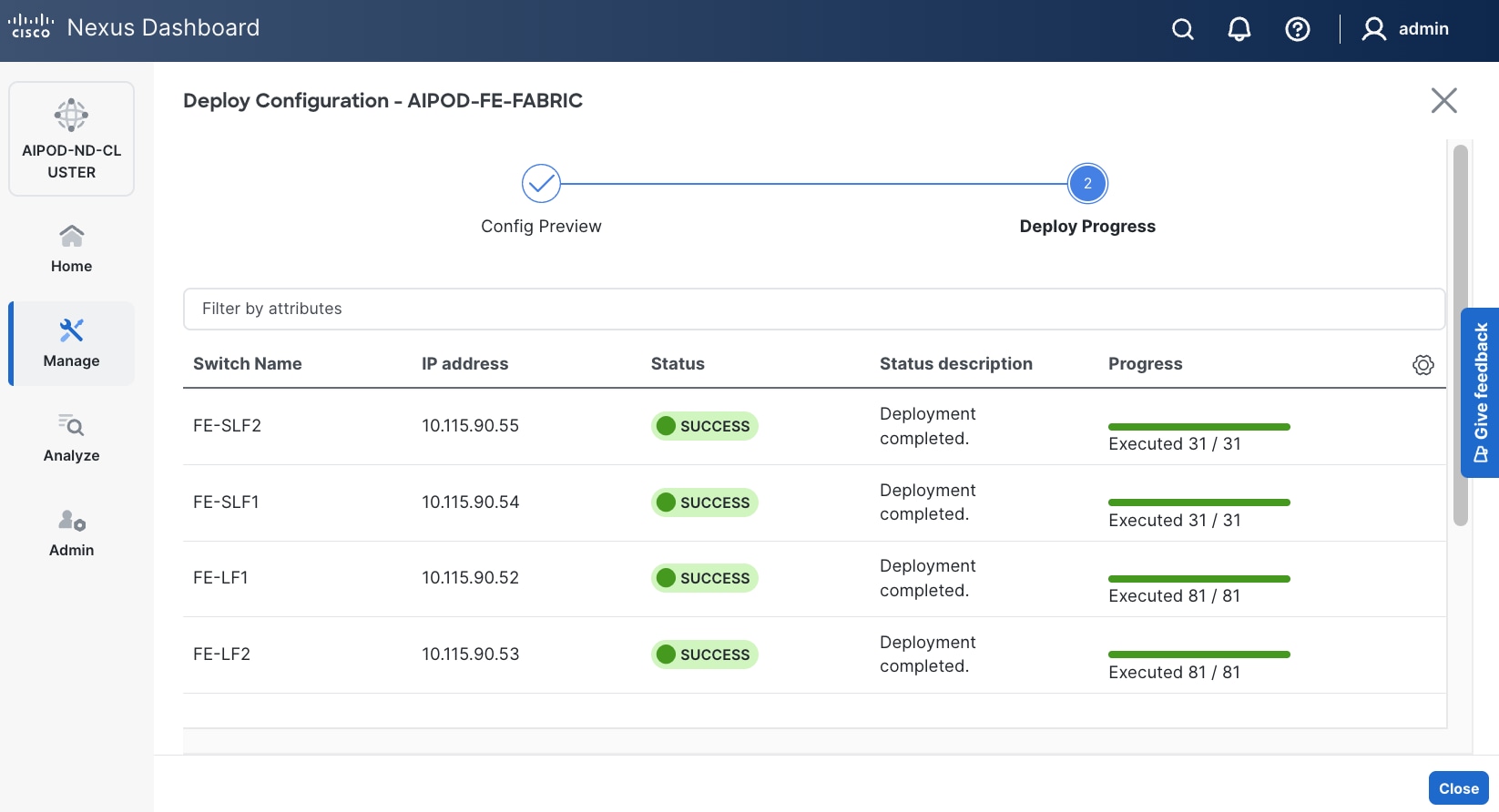

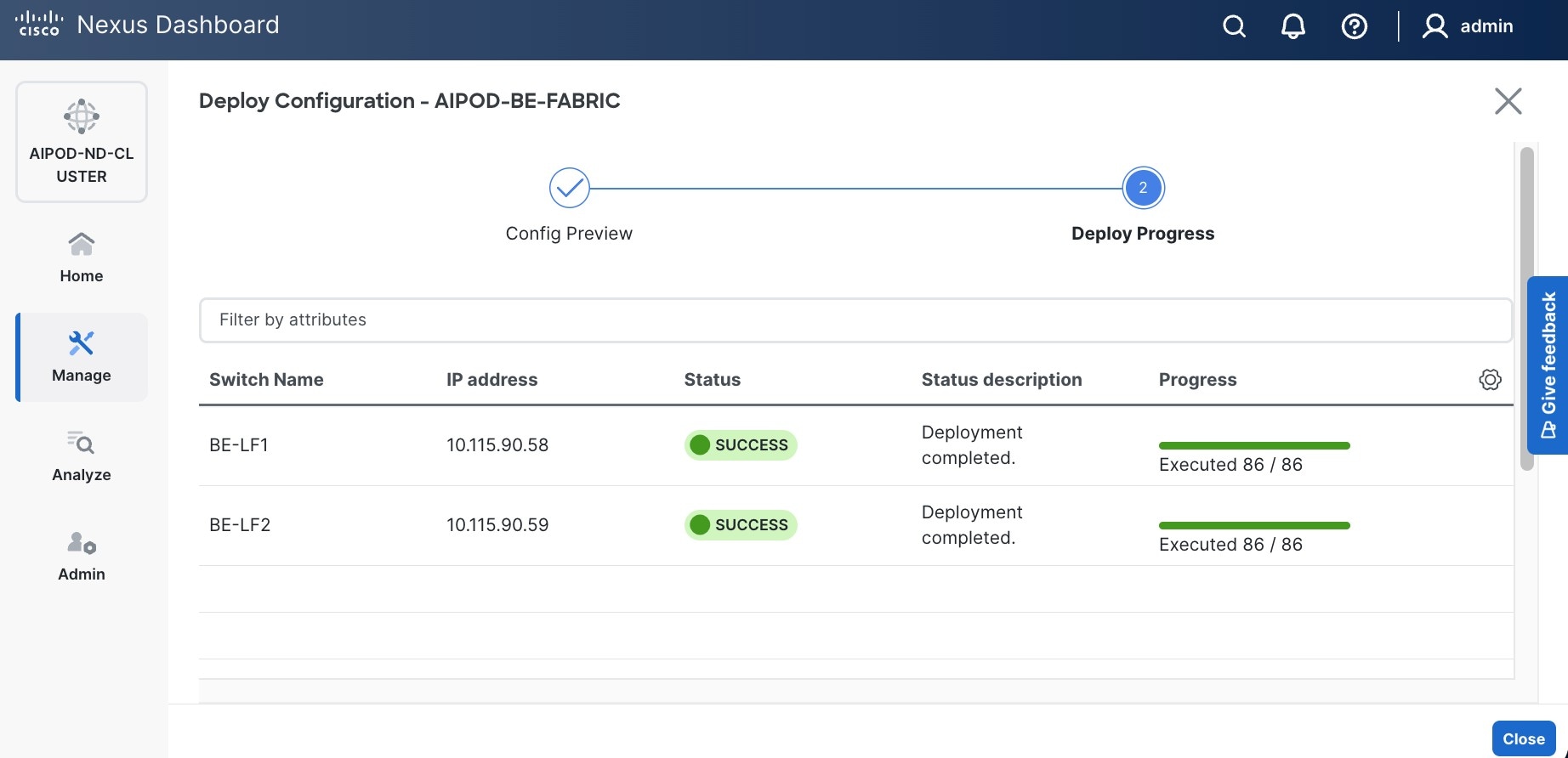

Step 16. Click Deploy All.

Step 17. Click Close.

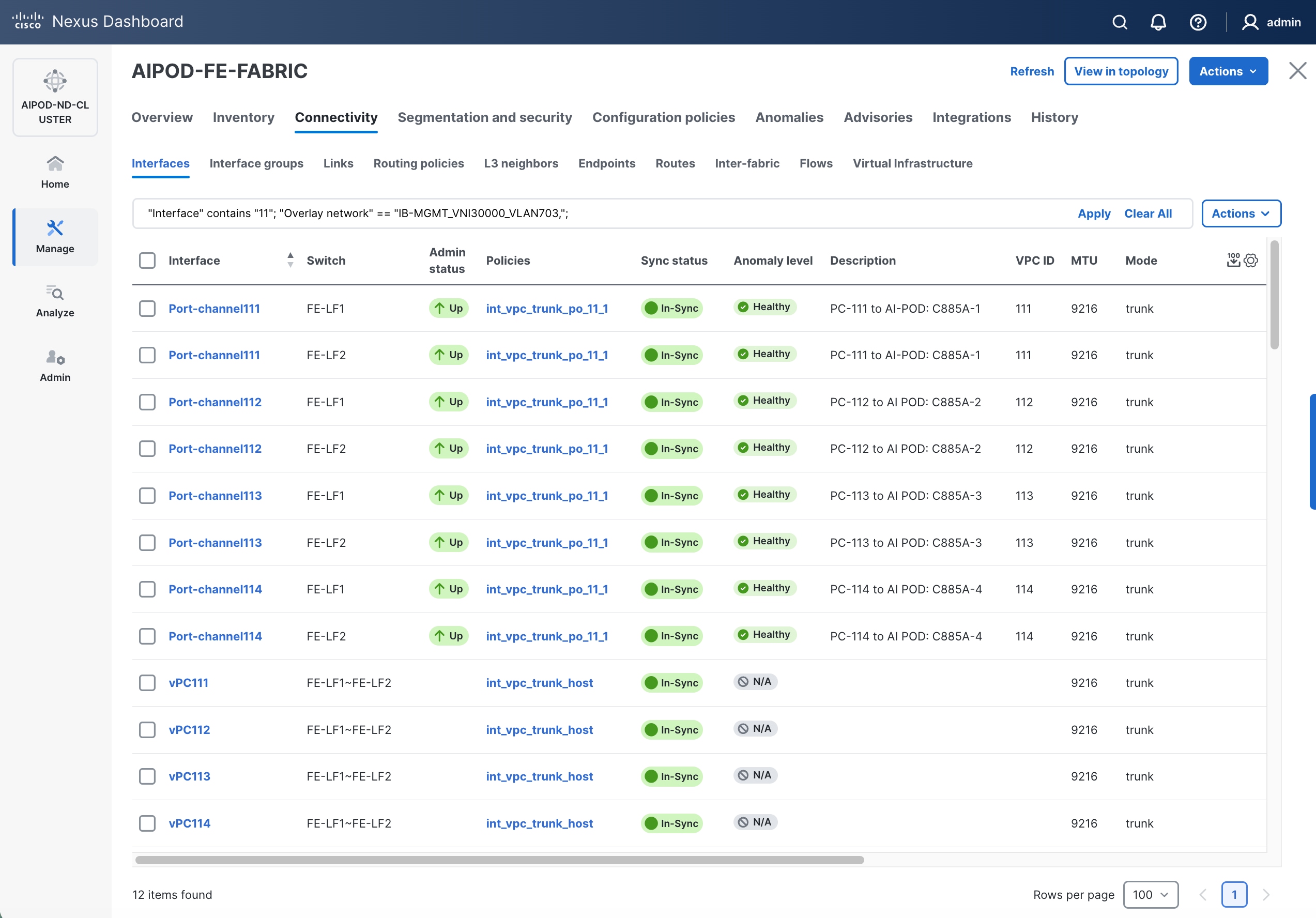

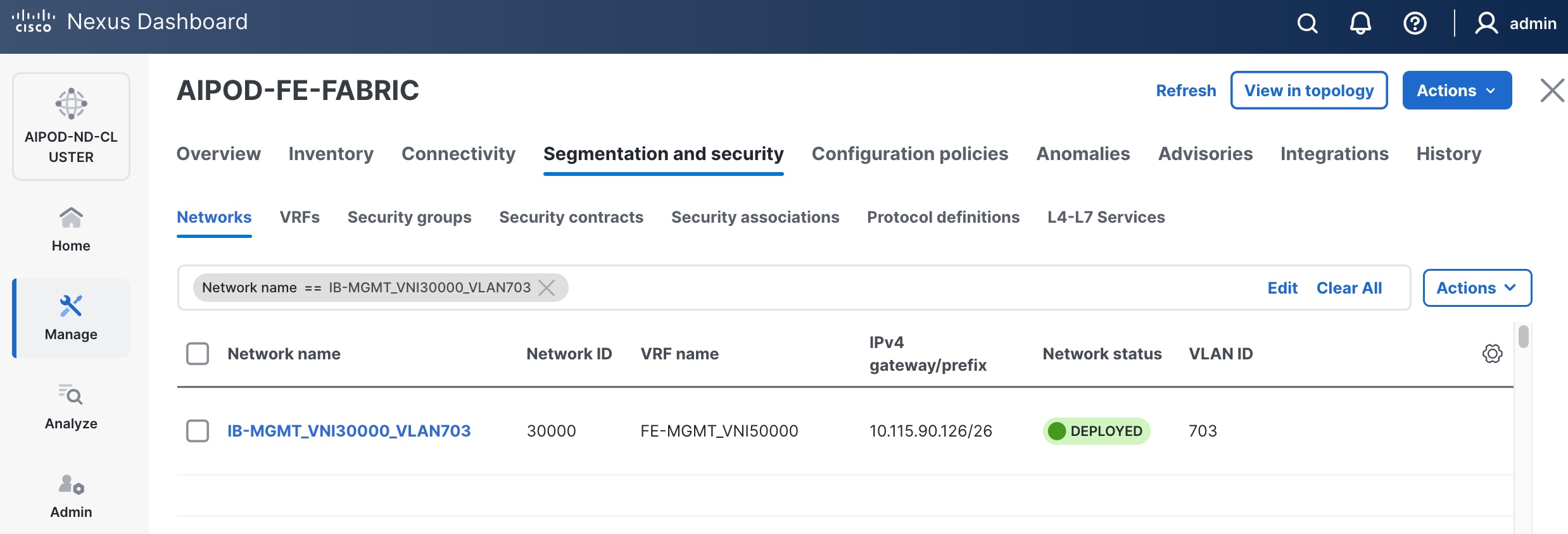

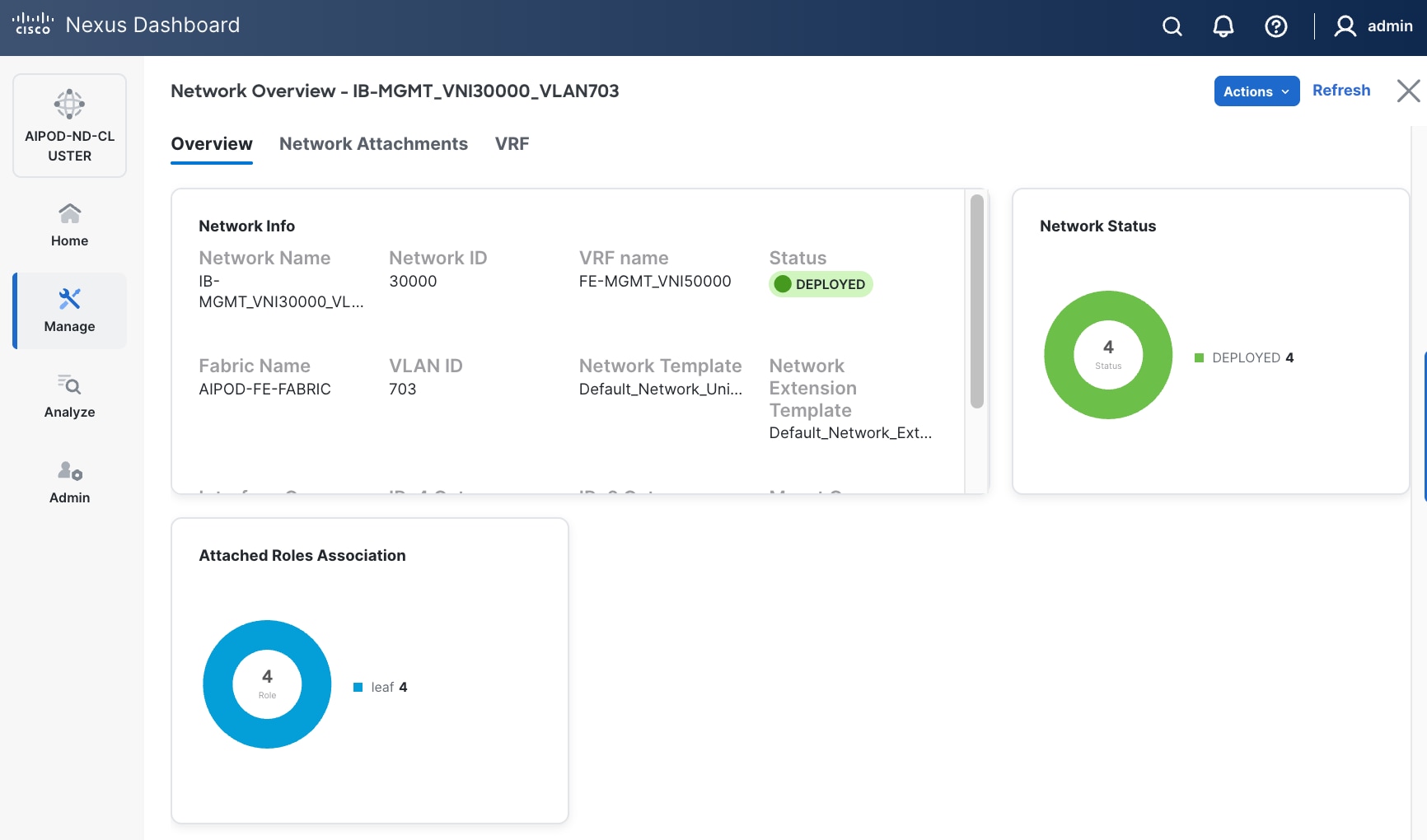

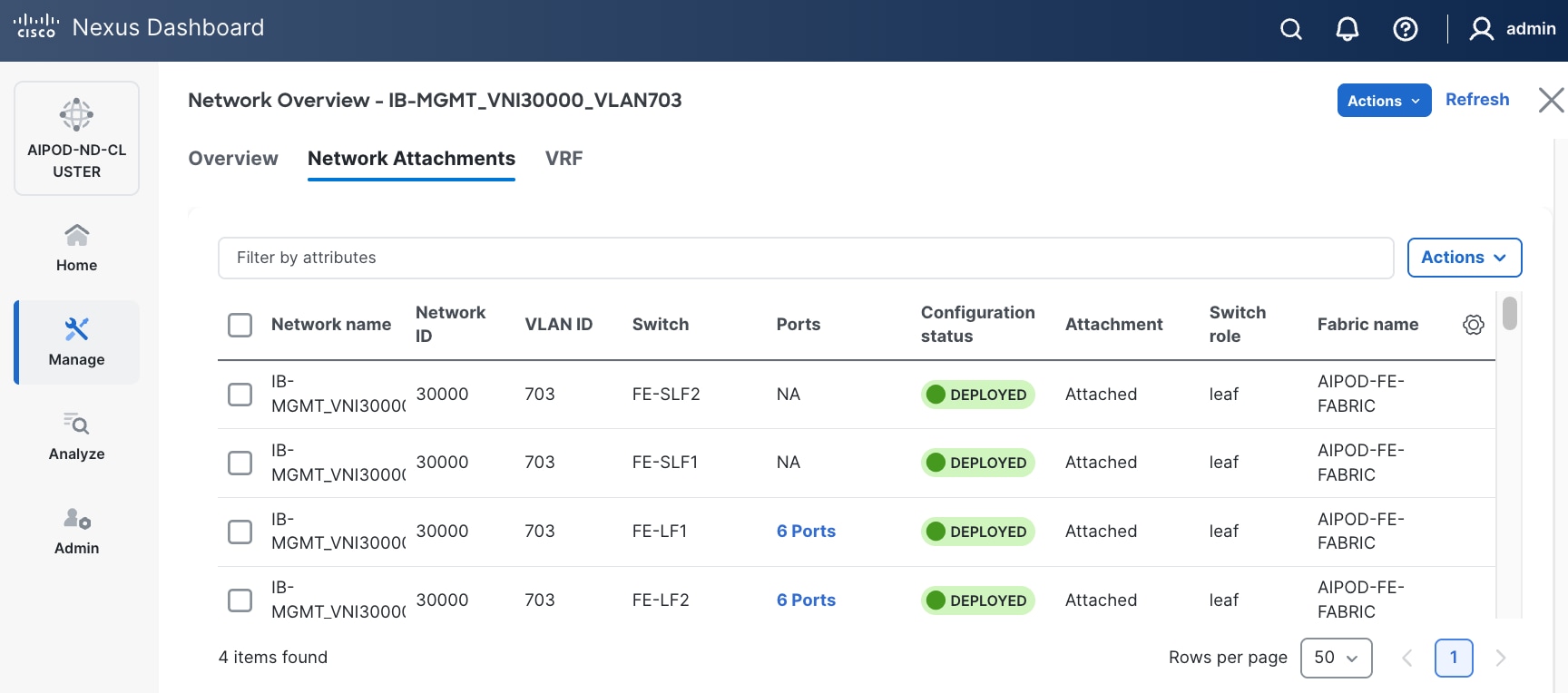

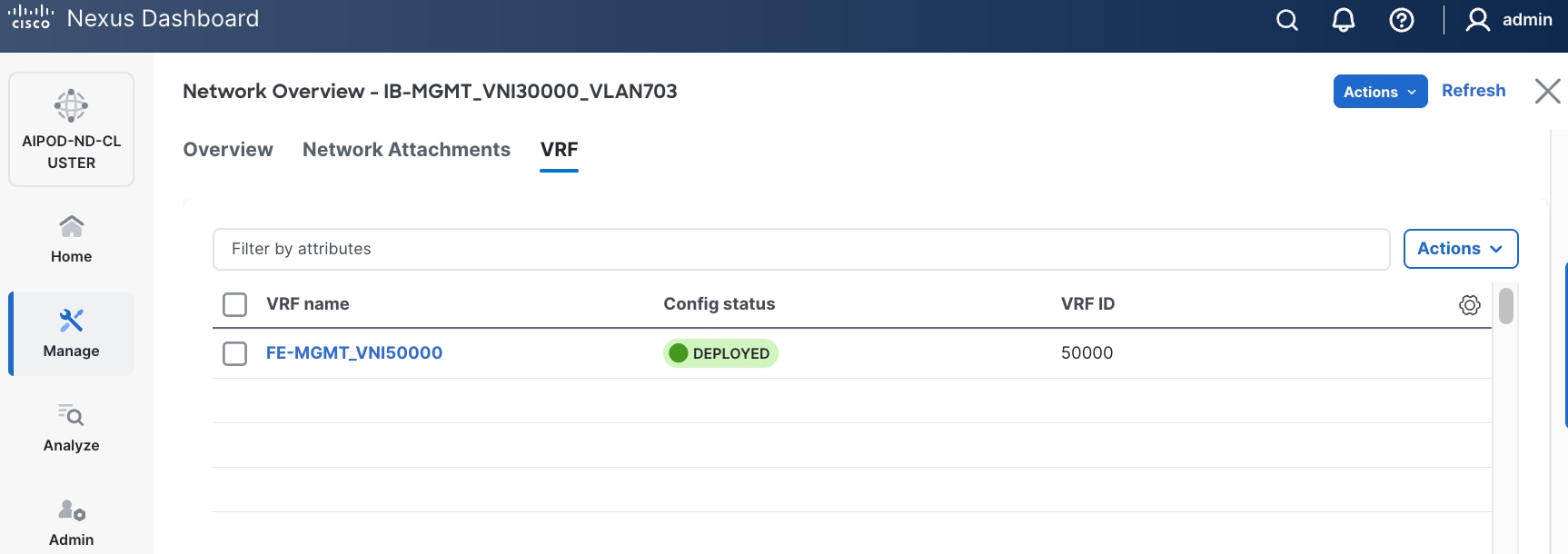

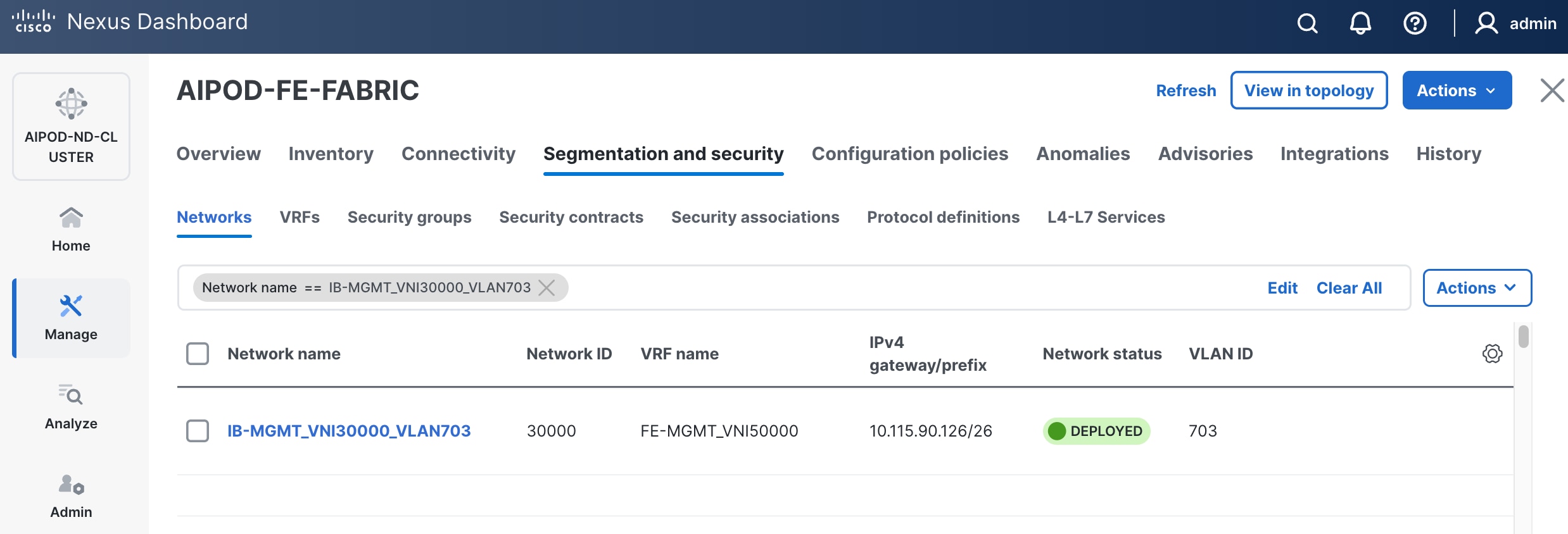

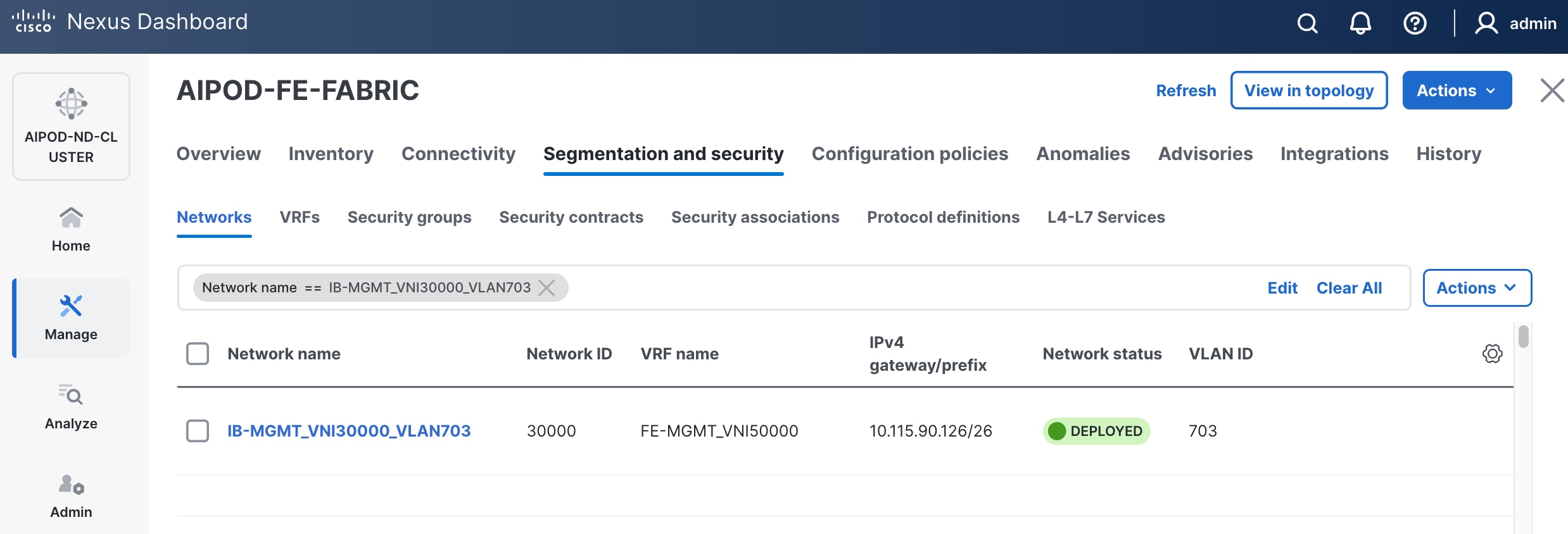

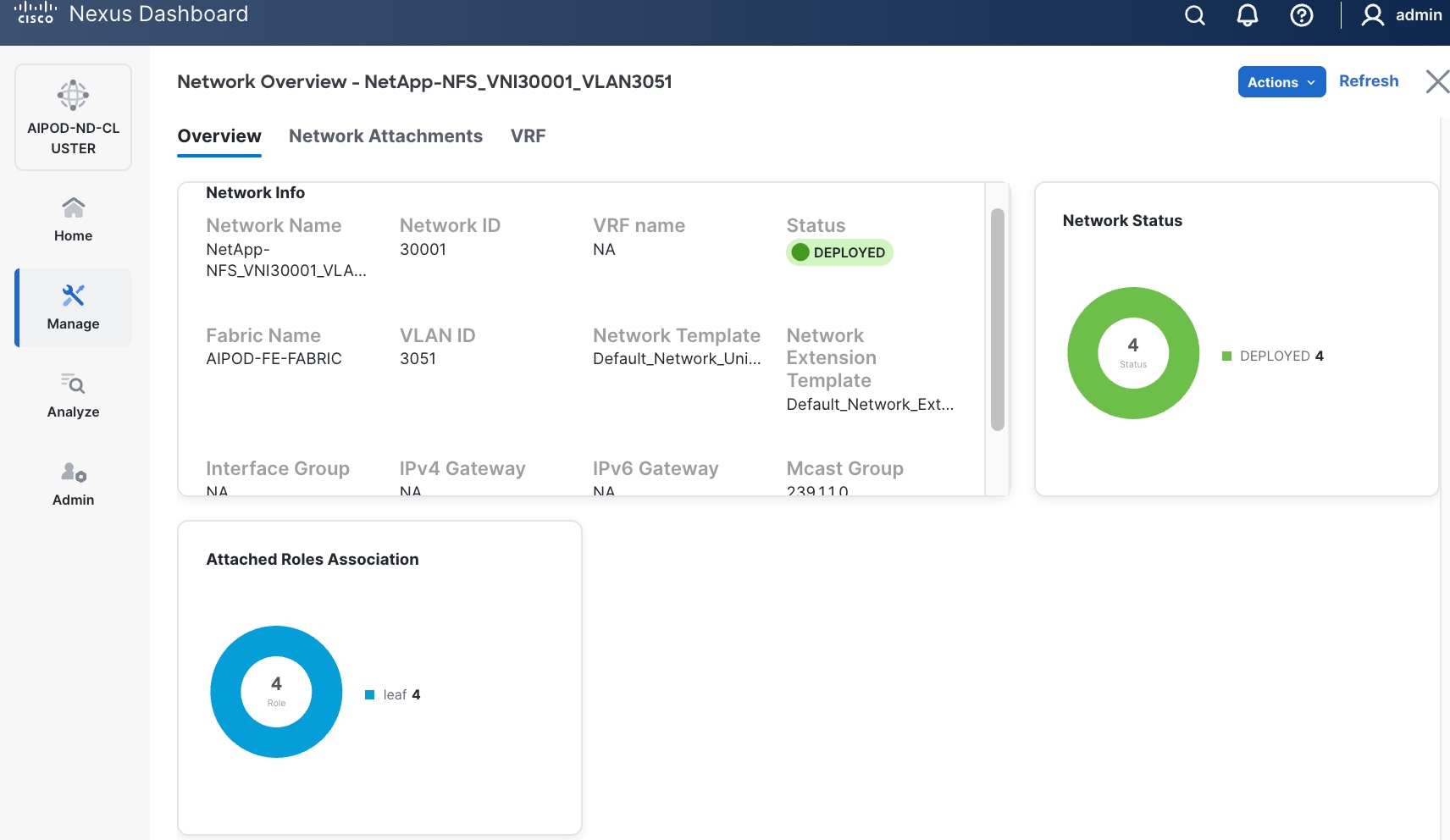

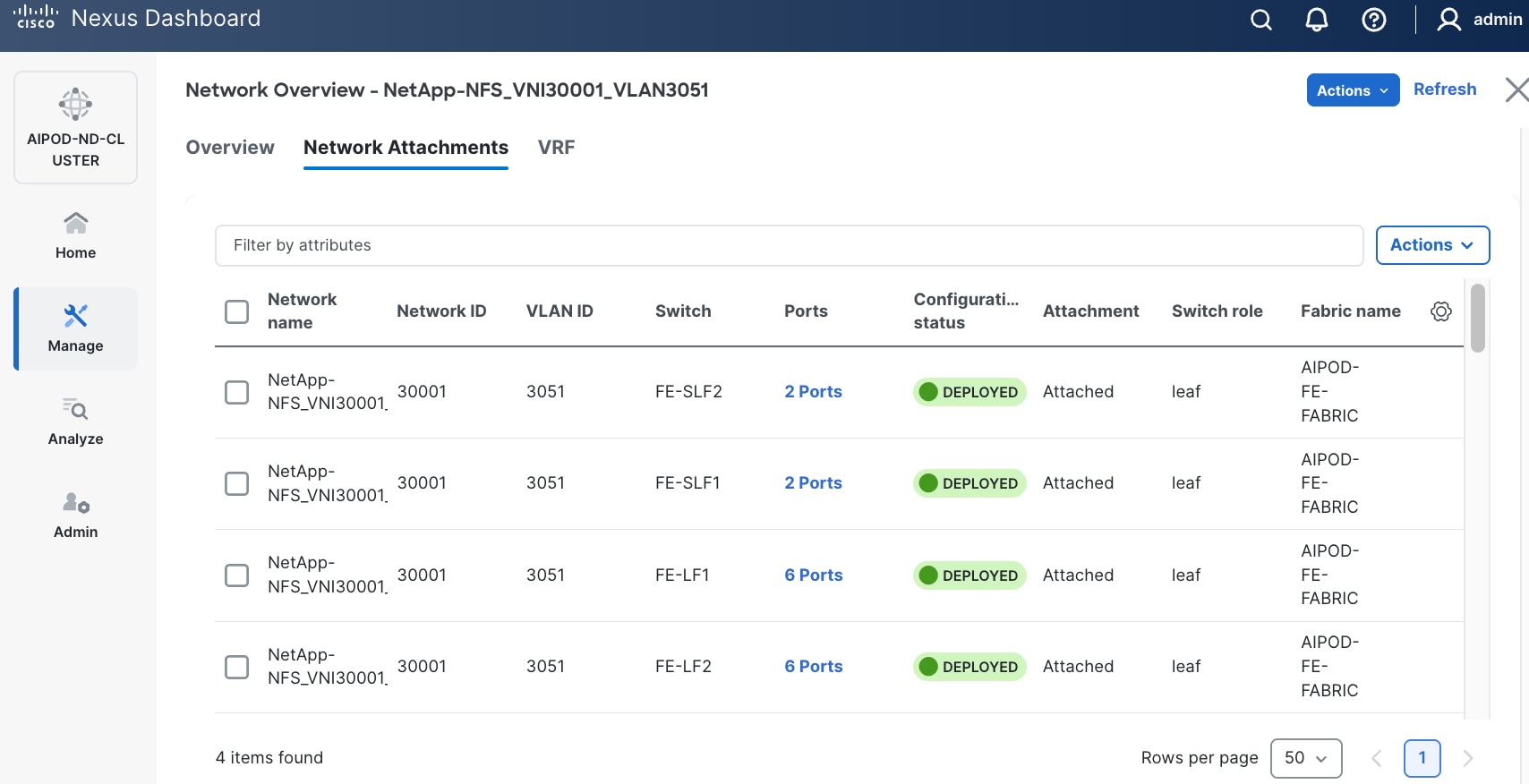

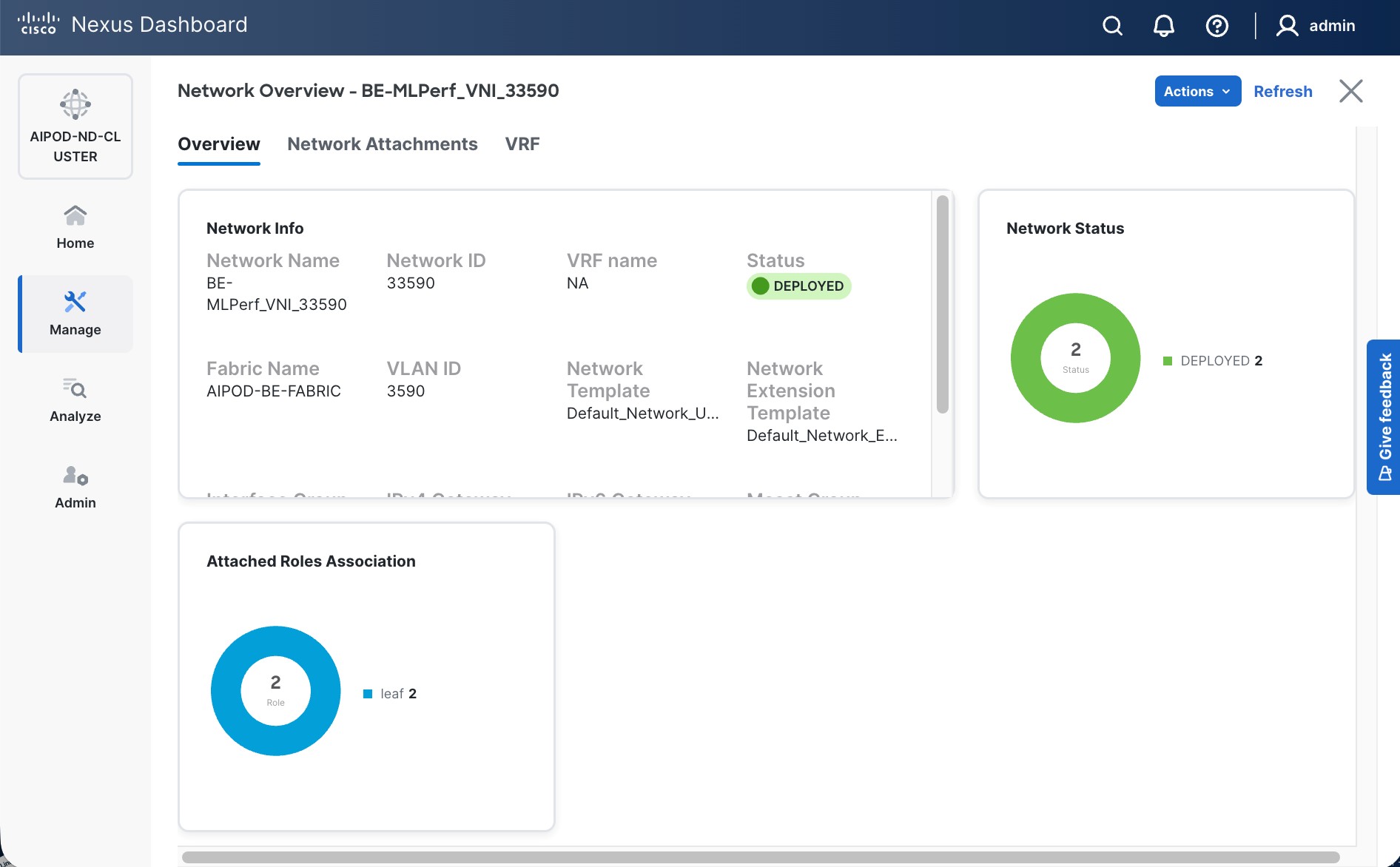

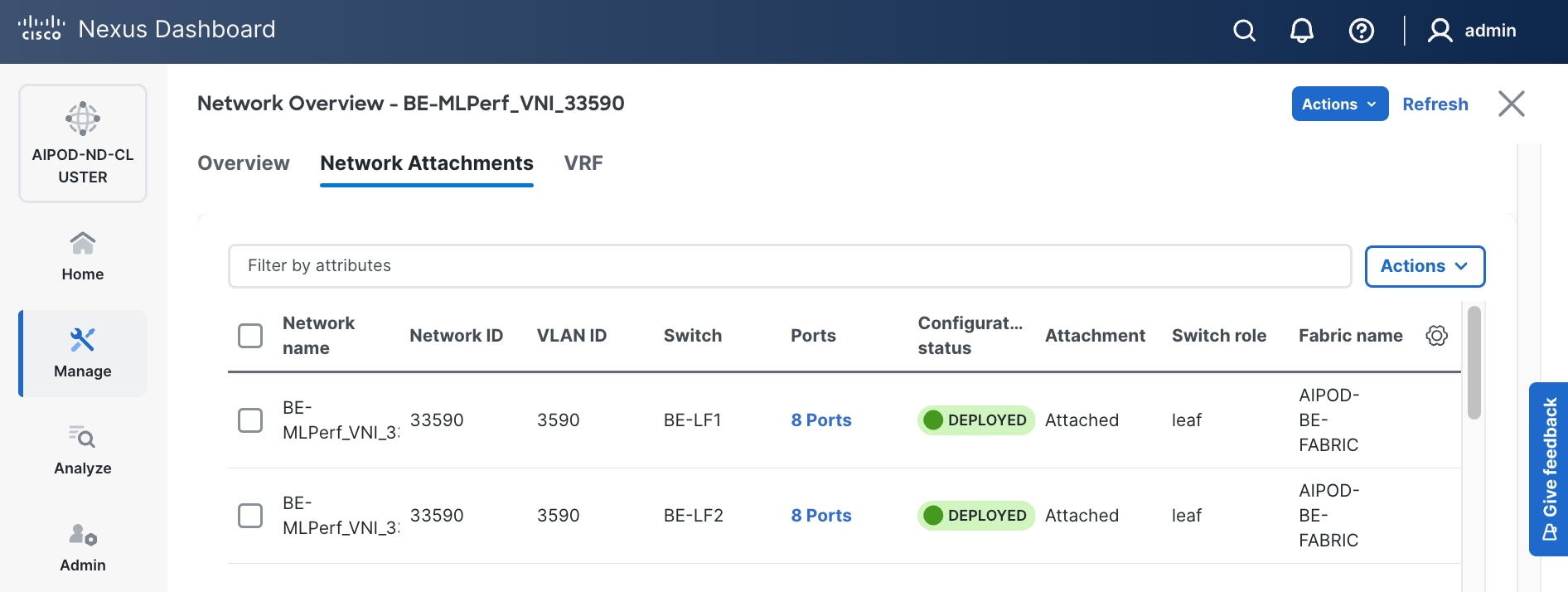

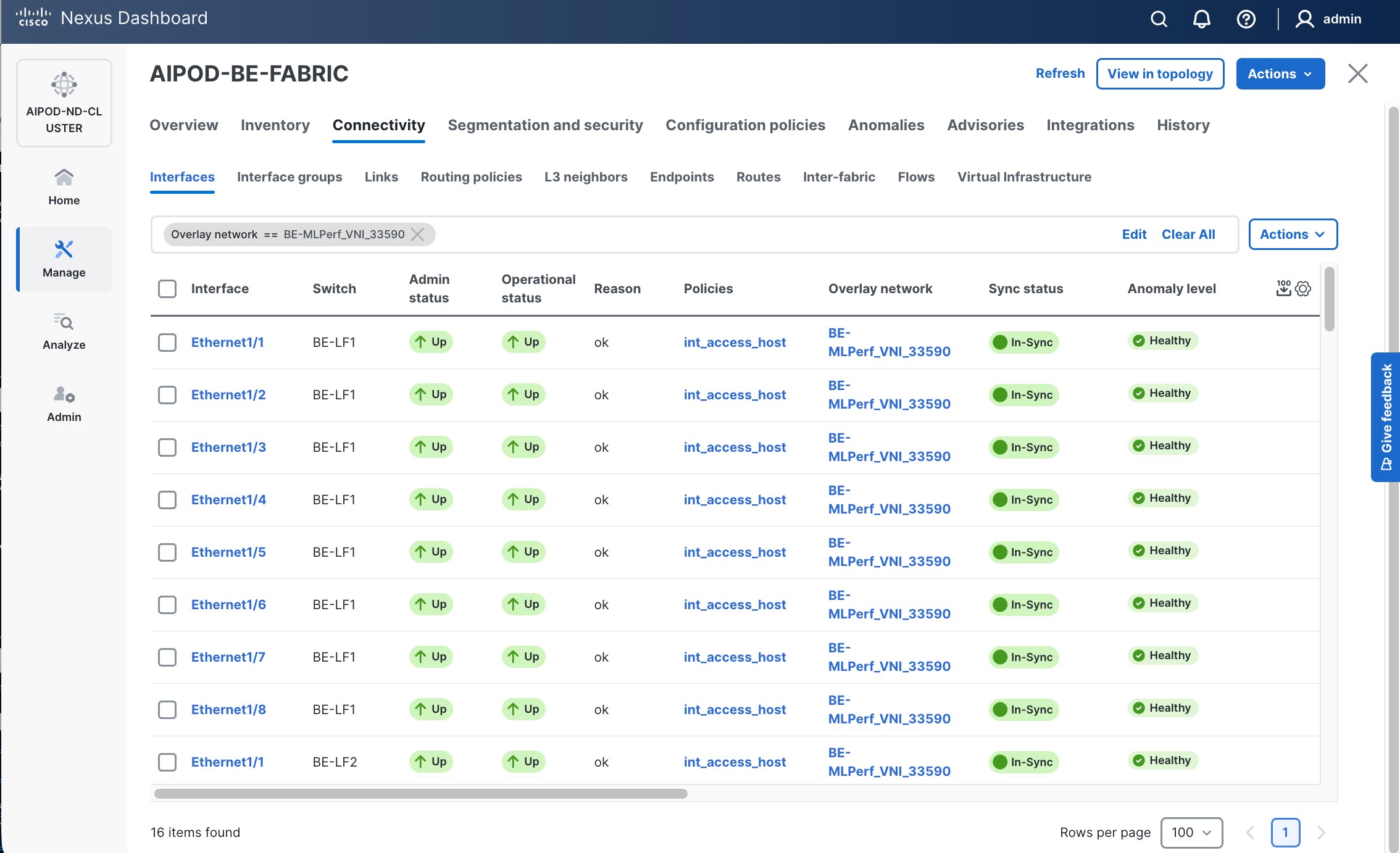

Step 18. Click the Network name to verify that the network was successfully deployed on the relevant switches and interfaces.

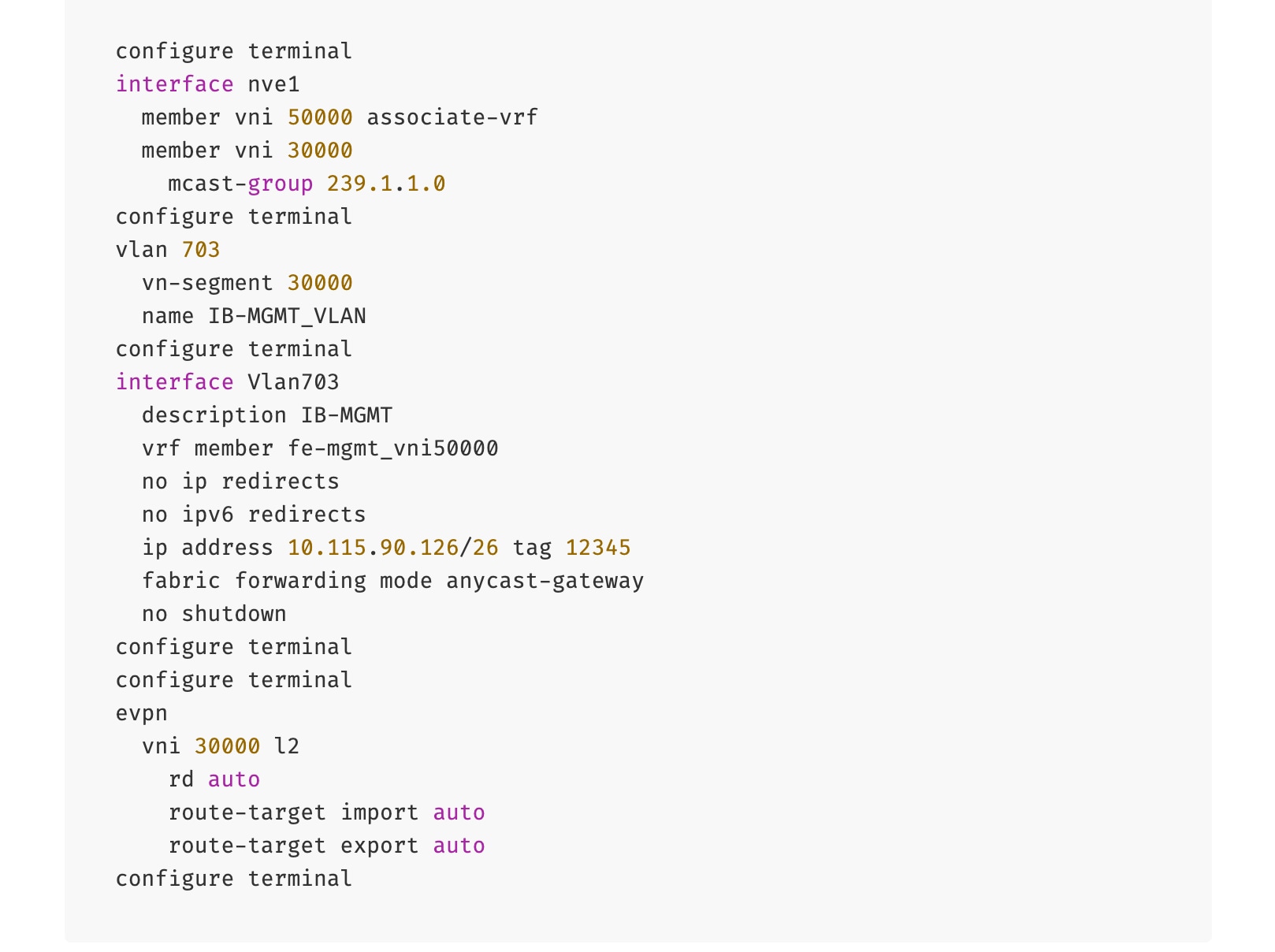

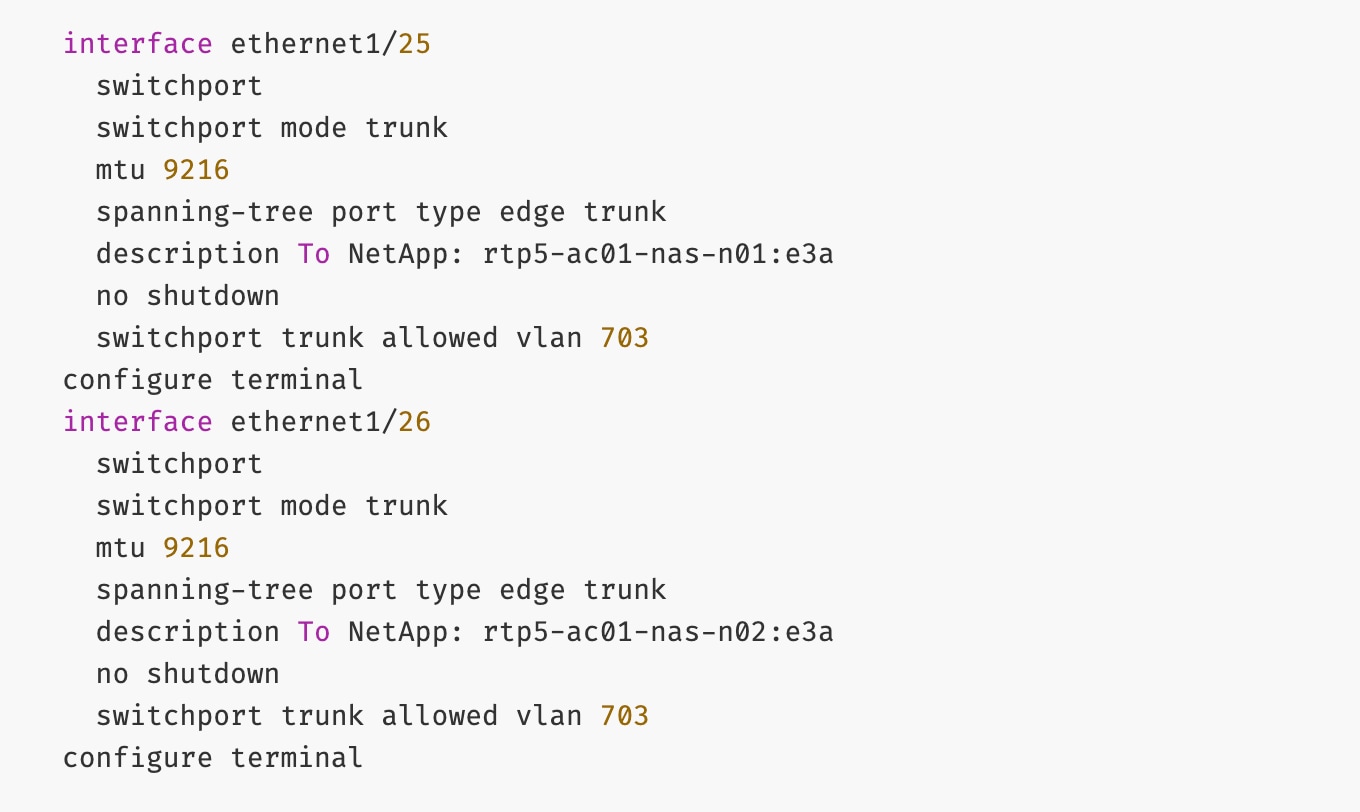

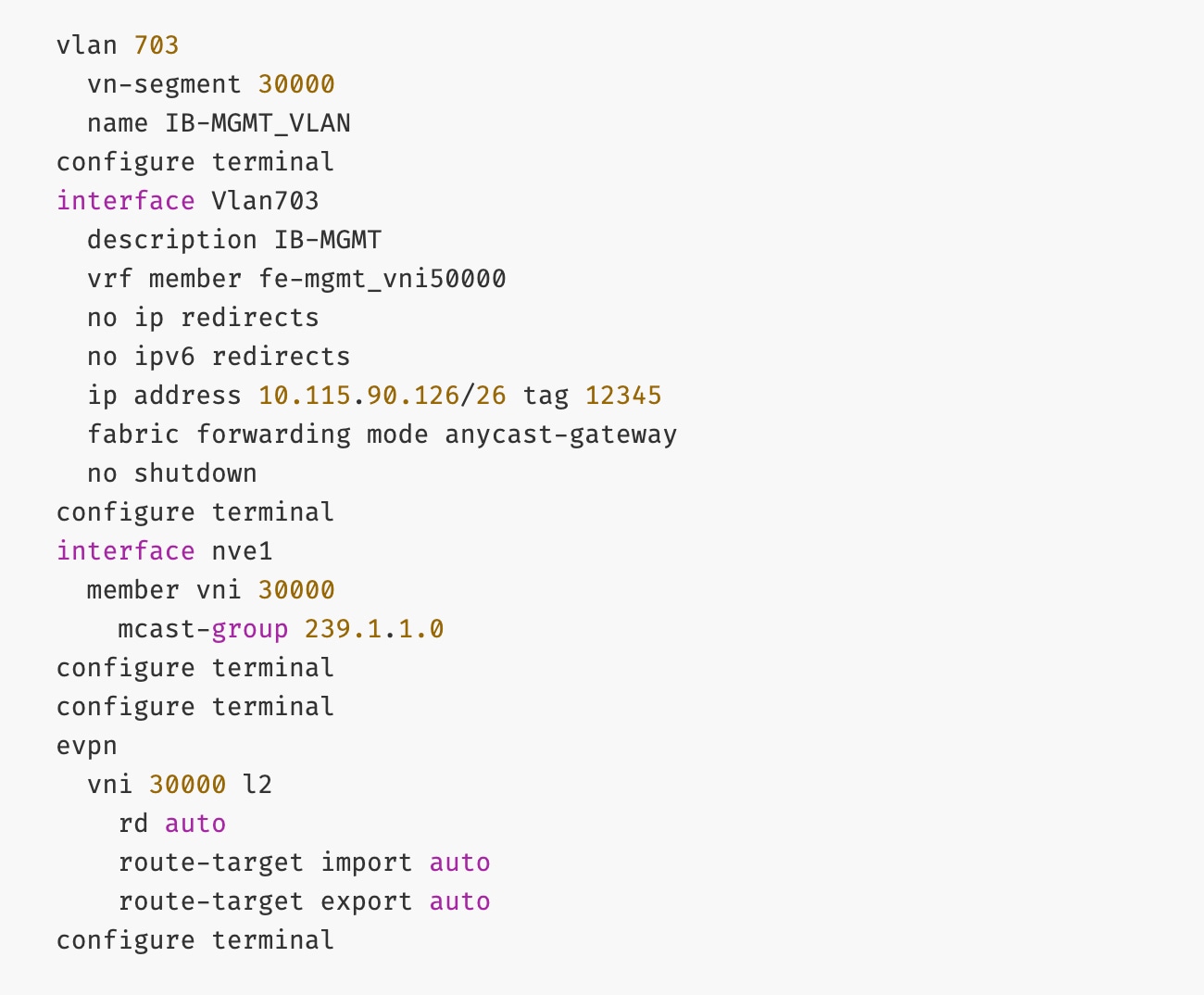

The configuration deployed on one compute leaf switch is provided below as a reference:

To deploy in-band management connectivity to Management UCS X-Series Direct on the compute leaf switches in the FE fabric, follow the procedures below.

Procedure 2. Deploy in-band management connectivity for management UCS X-Direct chassis

Step 1. Use a web browser to navigate to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and navigate to Segmentation and Security > Networks tab.

Step 4. Select the previously deployed in-band management network from the list.

Step 5. Click the lower of the two Actions buttons and select Multi-attach from the list.

Step 6. Select the leaf switch pair from the list that the UCS X-Series Direct system connects to.

Step 7. Click Next.

Step 8. Click Select Interfaces to the right of the leaf switch pair to add the interfaces that connect to management UCS X-Series Direct.

Step 9. Click Next.

Step 10. Click Save.

Step 11. Click Deploy All.

Step 12. Click Close.

Step 13. Click the Network name to verify that the network was successfully deployed on the relevant switches and interfaces.

The configuration deployed on one compute leaf switch is provided below as a reference:

(Ubuntu) Enable In-Band Management Connectivity to BCM Node(s)

To deploy in-band management connectivity to BCM node connected to compute leaf switches in the FE fabric, you will be deploying this network on the compute Leaf switch pair that connects to the BCM node.

Table 13. Setup Parameters for FE Fabric: In-Band Management Connectivity to BCME Nodes

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| IB-MGMT Network |

|

|

| Name |

IB-MGMT_VN30000_VLAN703 |

|

| IB-MGMT VRF |

|

|

| VRF Name |

FE-MGMT_VN50000 |

|

| Management BCME Node |

|

|

| vPC Leaf Switch Pair |

FE-LF1, FE-LF2 |

|

| BCM Interface |

Port-Channel 17 |

|

To deploy in-band management connectivity to BCM node connected to compute leaf switches in the FE fabric, complete the procedures below.

Procedure 1. Enable in-band management connectivity to BCM node

Step 1. Use a web browser to navigate to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and go to Segmentation and Security > Networks tab.

Step 4. Select the previously deployed in-band management network from the list.

Step 5. Click the lower of the two Actions buttons and select Multi-attach from the list.

Step 6. Select the leaf switch pair from the list that the BCME node connects.

Step 7. Click Next.

Step 8. Click Select Interfaces to the right of the network name to add the interfaces that connect to the BCM node.

Step 9. Click Save.

Step 10. Click Next.

Step 11. Click Save.

The configuration deployed on one compute leaf switch is provided below as a reference:

Step 12. Click Deploy All.

Step 13. Click Close.

Step 14. Click the Network name to verify that the network was successfully deployed on the relevant switches and interfaces.

Enable Layer 2 Connectivity to NetApp Storage

To enable Layer 2 connectivity from the FE fabric to NetApp storage, you will be configuring four ports, two on each Leaf switch. Each port is configured as a trunk port using the default native VLAN.

Table 14. Setup Parameters for FE Fabric: Layer 2 Connectivity to NetApp Storage

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| Leaf Switches |

FE-SLF1, FE-SLF2 |

|

| NetApp Storage |

|

To Storage Leaf Switches |

| FE-SLF1 |

|

|

| Ports |

1/25 |

|

| Ports |

1/26 |

|

| FE-SLF2 |

|

|

| Ports |

1/25 |

|

| Ports |

1/26 |

|

To enable Layer 2 connectivity from the FE fabric to NetApp storage, follow the procedures below.

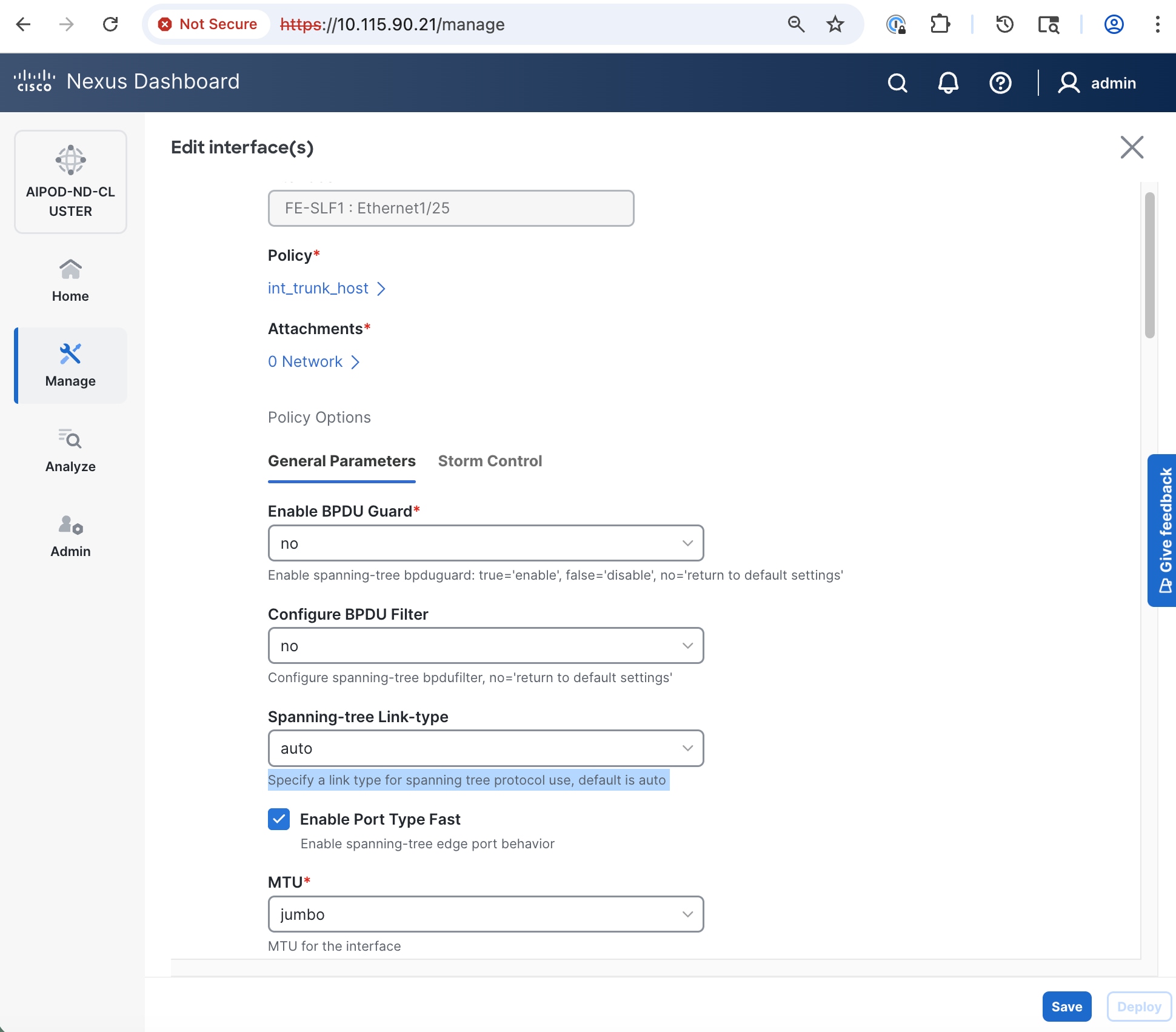

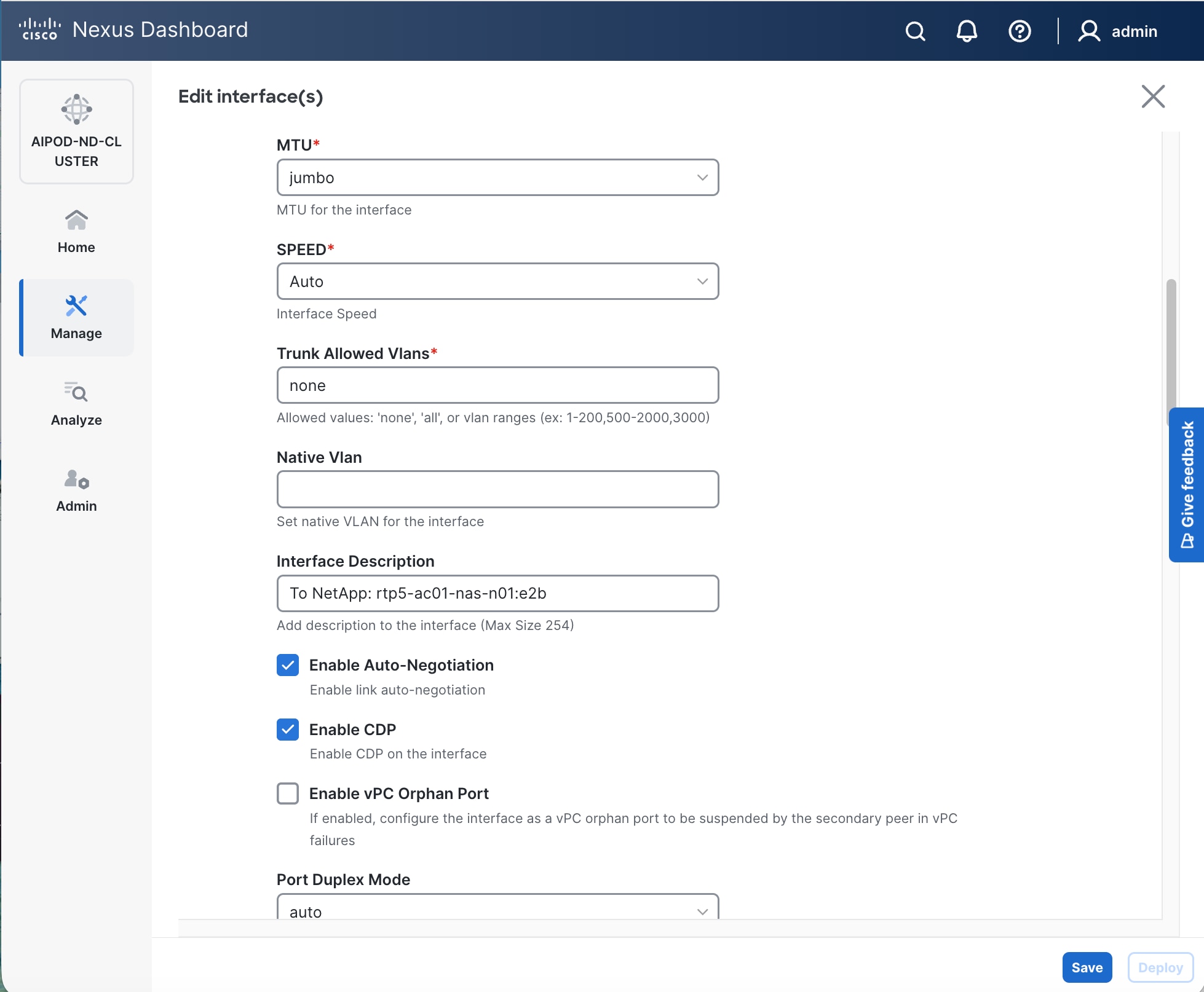

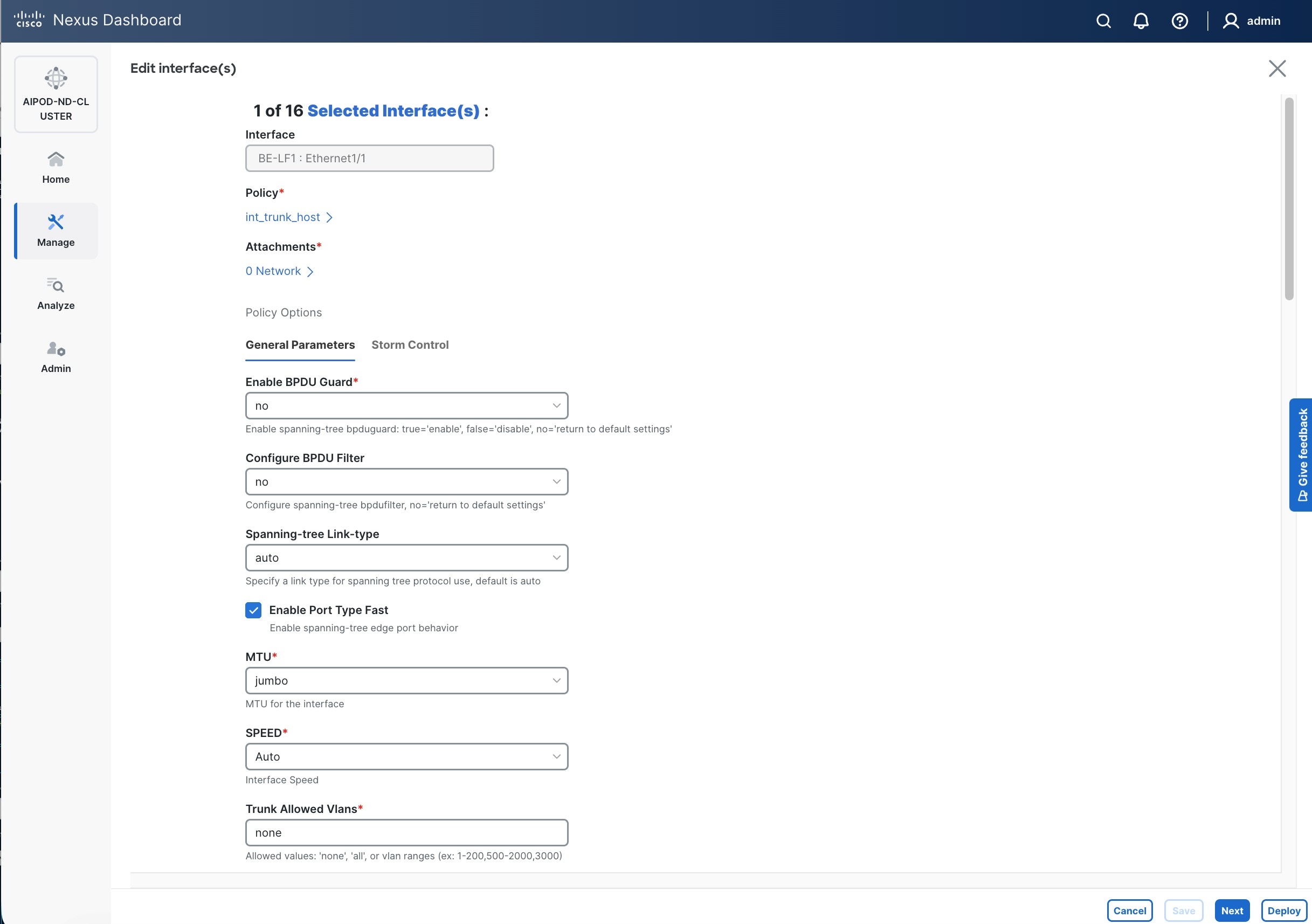

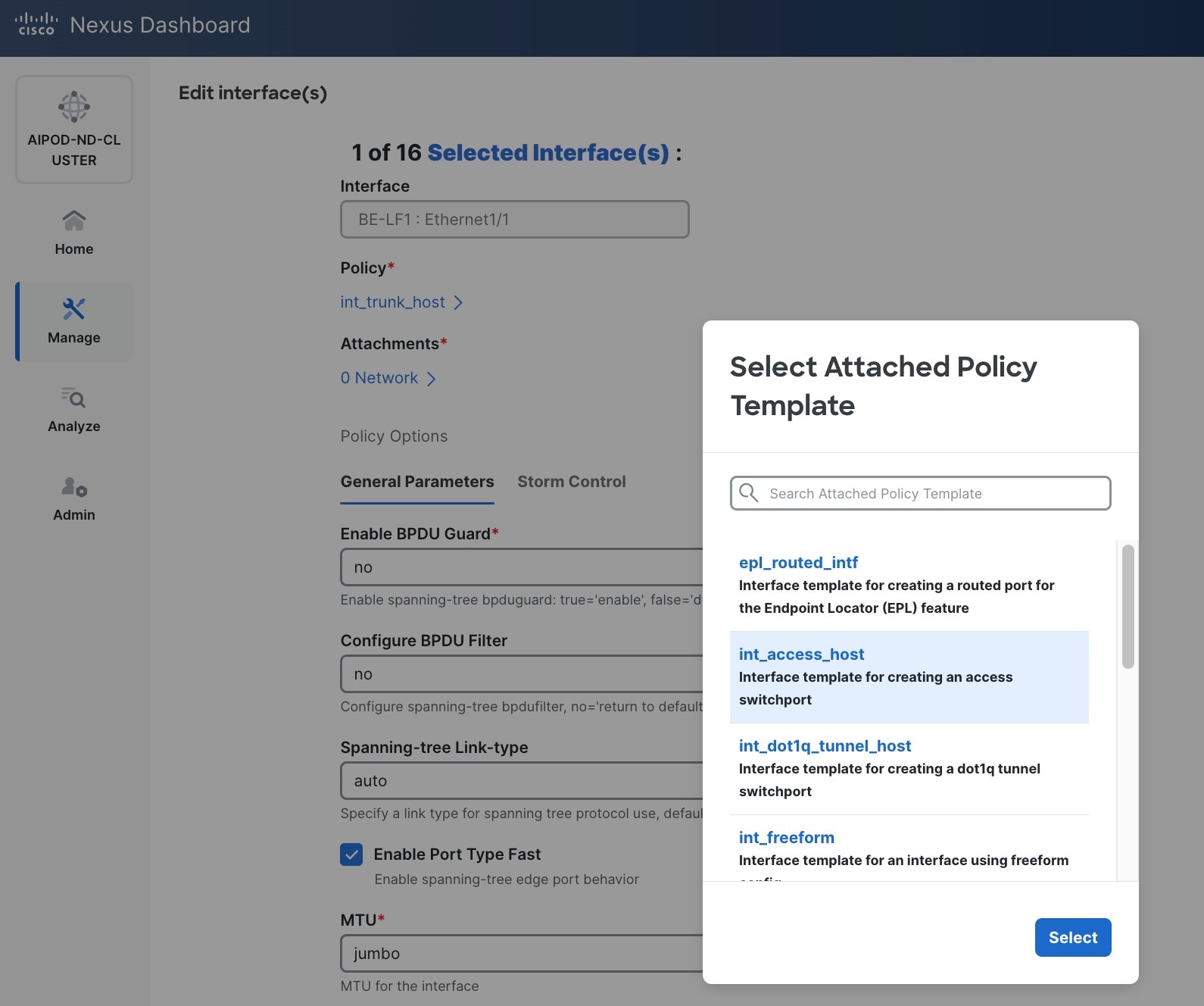

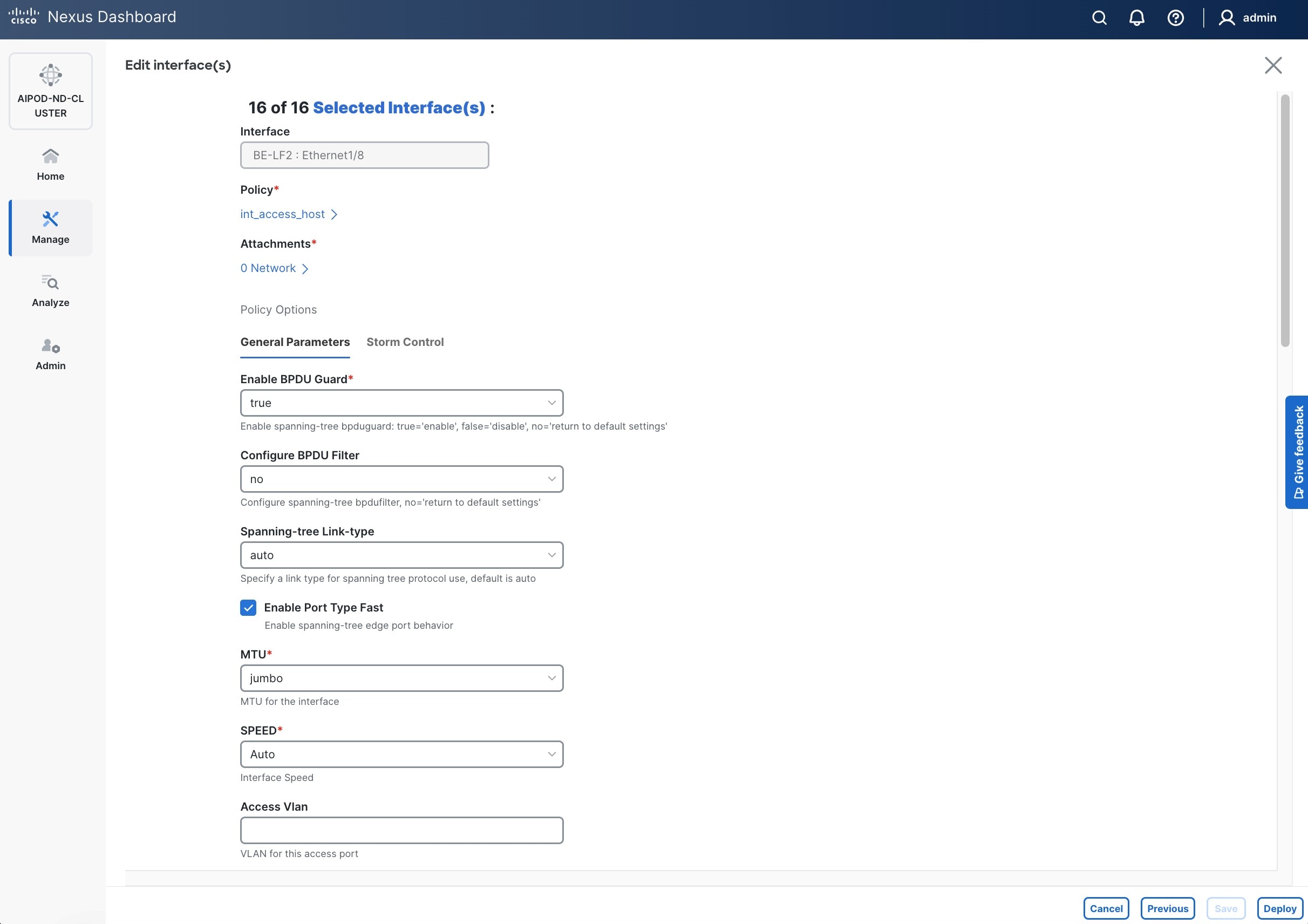

Procedure 2. Enable Layer 2 connectivity on the first port to NetApp storage

Step 1. Use a web browser to navigate to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

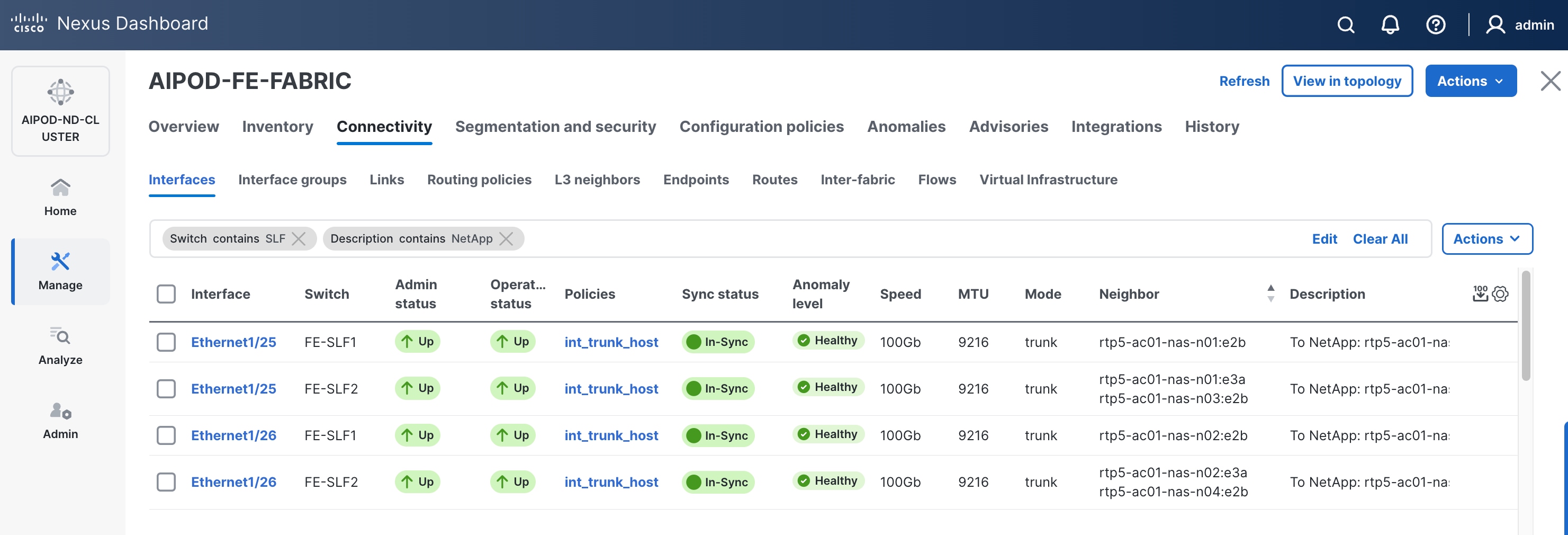

Step 3. Select the FE fabric and go to Connectivity > Interfaces tab.

Step 4. Filter on the storage leaf switches and the ports that connect to NetApp storage.

Step 5. Select the first interface to configure from the list.

Step 6. Click the lower of the two Actions buttons and select the Edit configuration from the drop-down list.

Step 7. In the Edit interface window, configure Interface Description and leave everything else as is.

Step 8. Click Save.

Step 9. Click X to exit the window (changes will be deployed later).

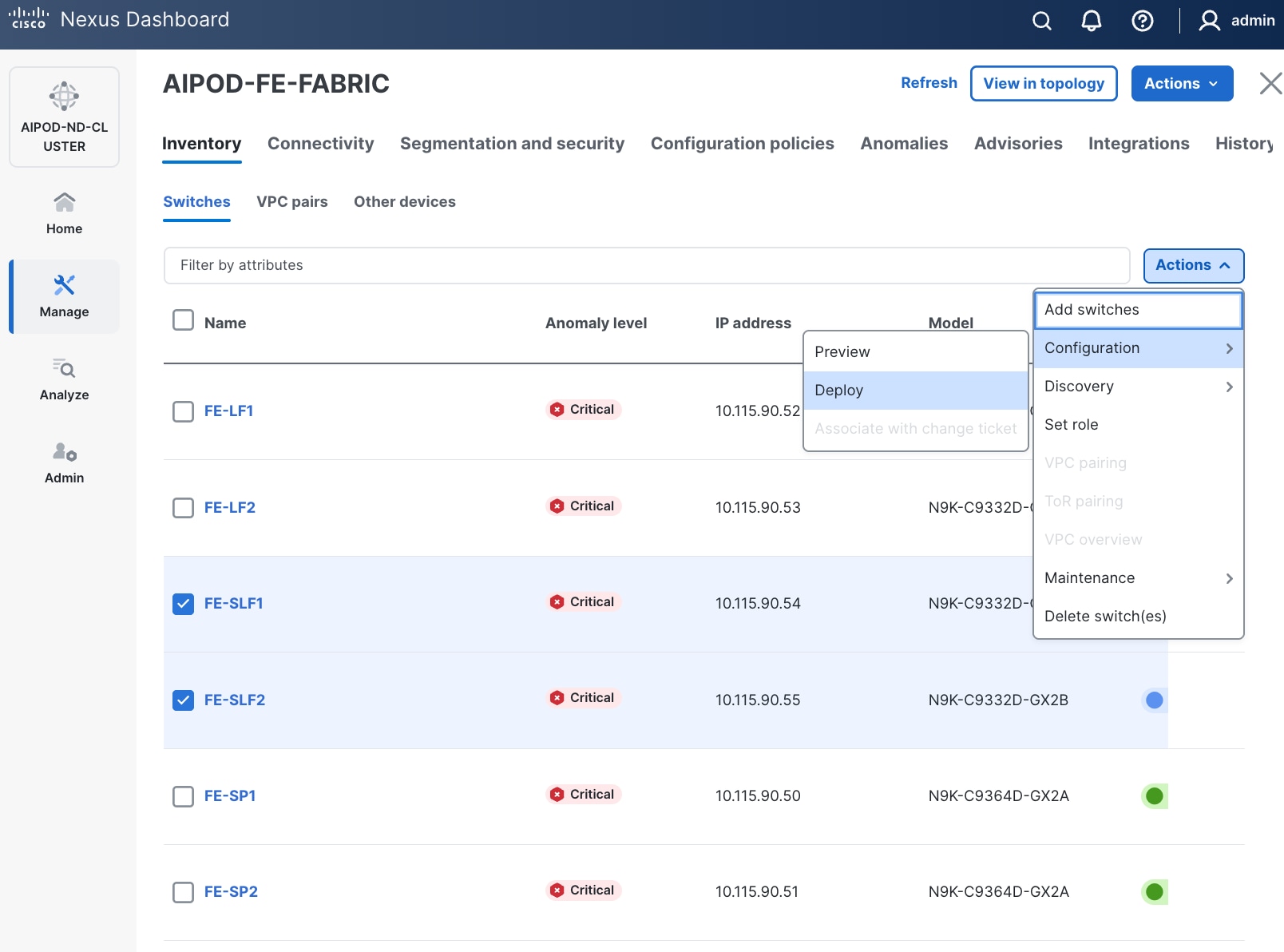

Step 10. Repeat for remaining ports on both storage leaf switches that connect to NetApp storage.

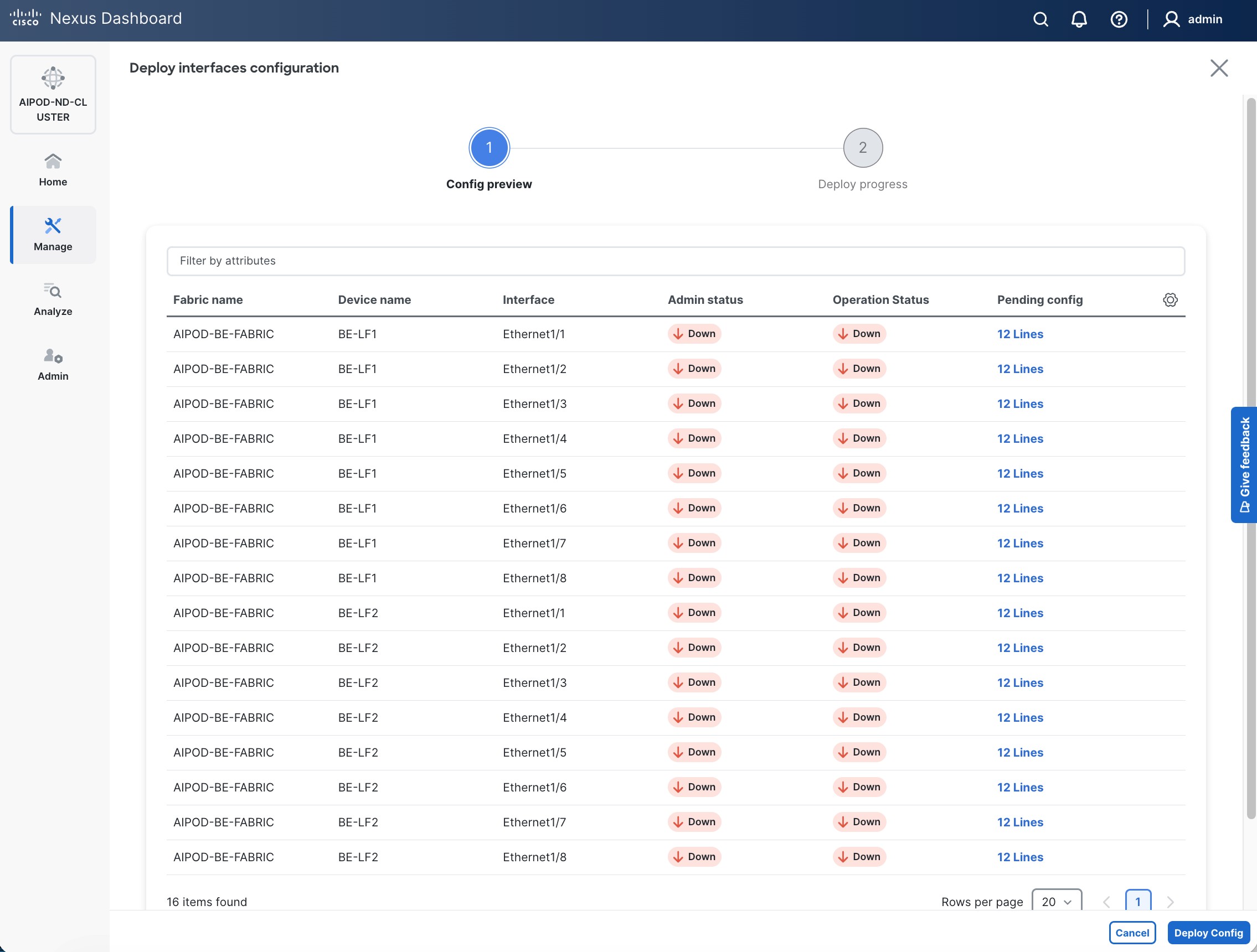

Step 11. Navigate to Manage > Inventory. Select the two storage Leaf switches and click the lower of the two Actions button.

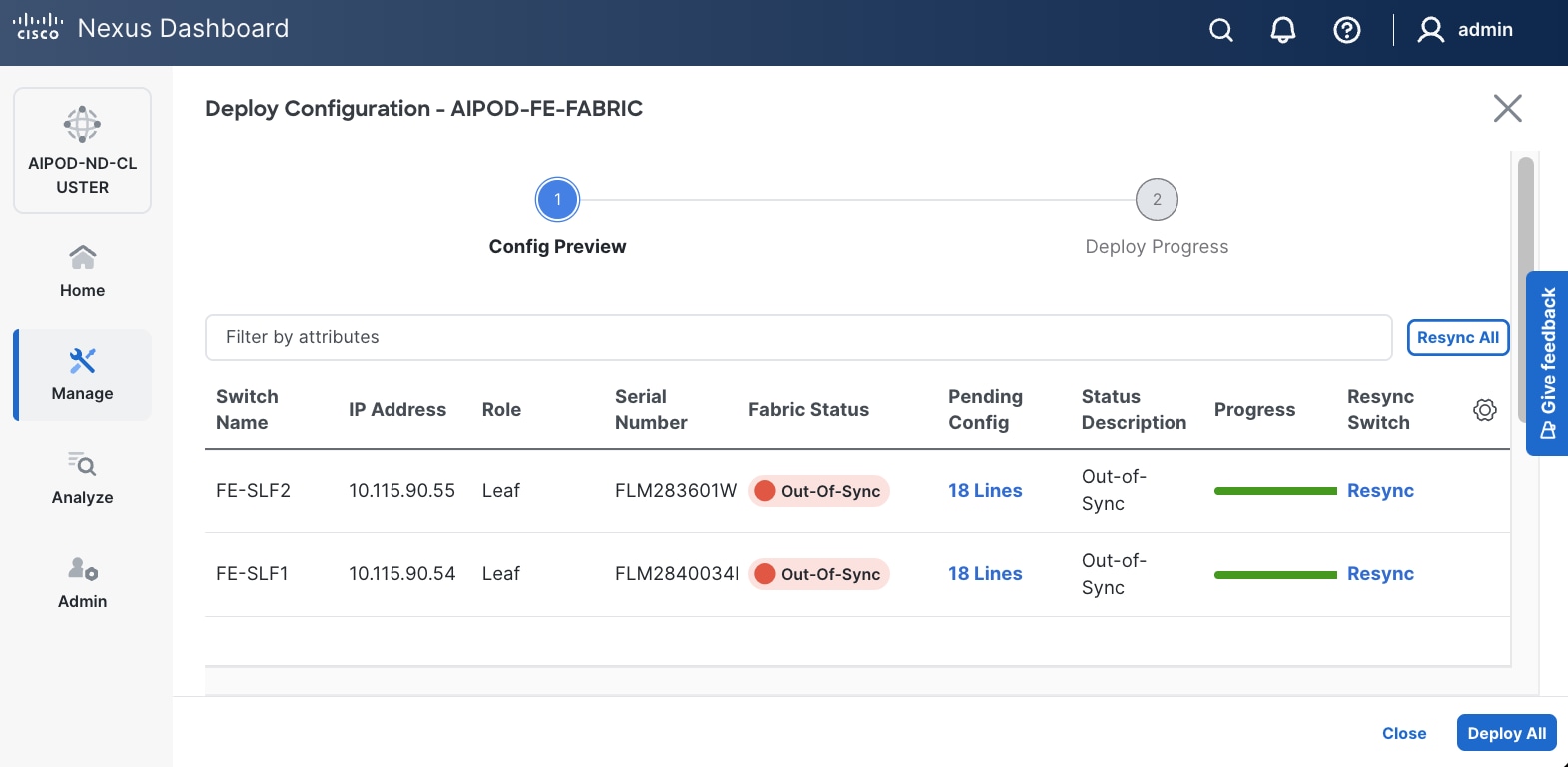

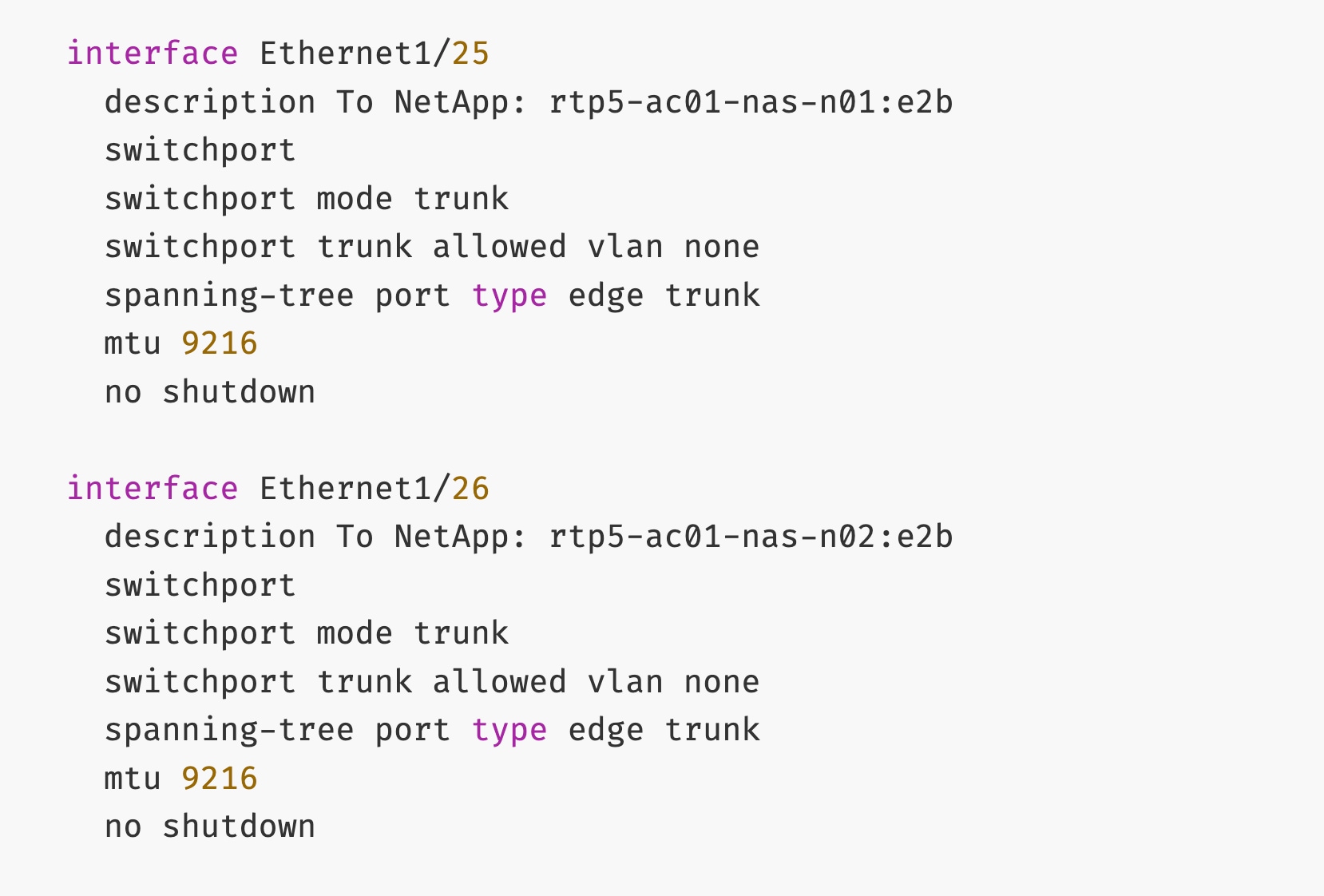

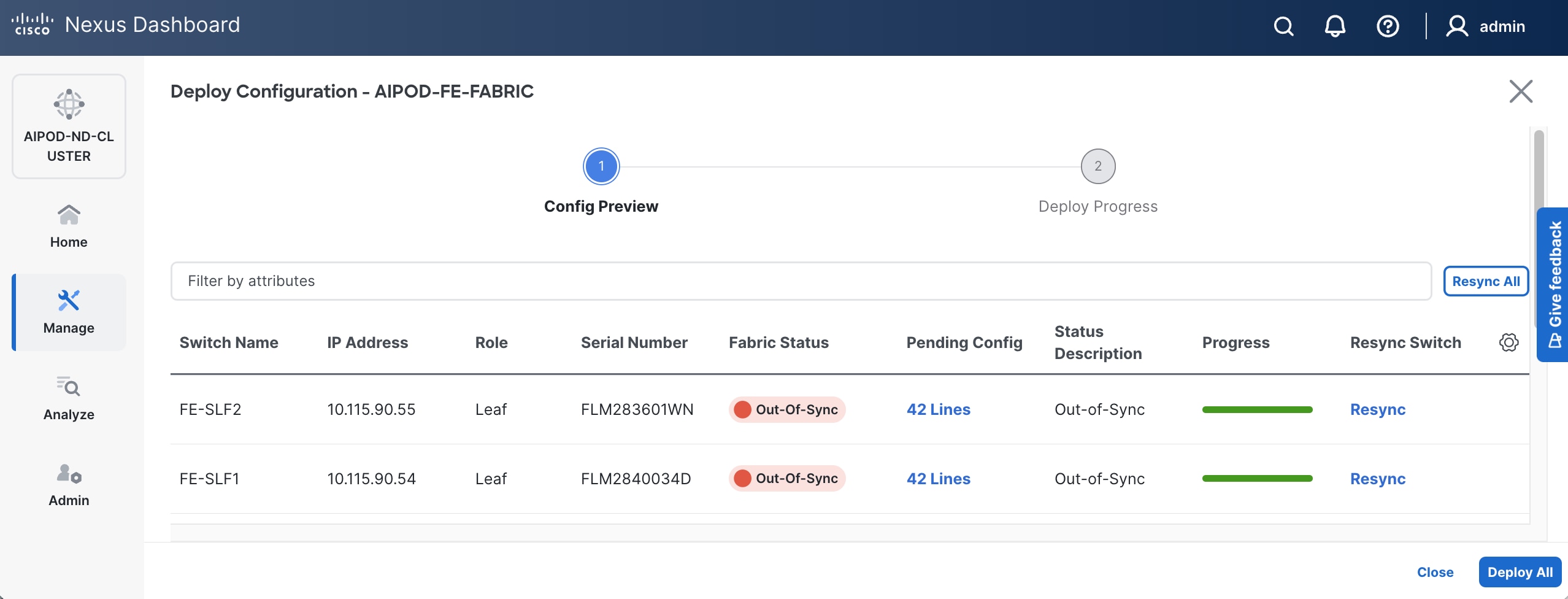

Step 12. Select Configuration > Deploy from the drop-down list.

Step 13. Click Pending Config for each switch to see the configuration that will be deployed on each switch. The deployed configuration from one leaf switch is provided as reference below.

Step 14. Click Deploy All.

Step 15. Click Close.

Enable In-Band Management Connectivity to NetApp Storage

Table 15. Setup Parameters for FE Fabric: In-Band Management Connectivity to NetApp Storage

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| IB-MGMT Network |

|

|

| Name |

IB-MGMT_VN30000_VLAN703 |

|

| NetApp Storage |

|

On Storage Leaf Switches |

| Storage Leaf Switch |

FE-SLF1 |

|

| Port |

e1/25 |

|

| Port |

e1/26 |

|

| Storage Leaf Switch |

FE-SLF2 |

|

| Port |

e1/25 |

|

| Port |

e1/26 |

|

Procedure 1. Deploy In-Band Management Connectivity for NetApp storage

Step 1. Use a web browser to navigate to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and go to Segmentation and Security > Networks tab.

Step 4. Select the previously deployed in-band management network from the list.

Step 5. Click the lower of the two Actions buttons and select Multi-attach from the drop-down list.

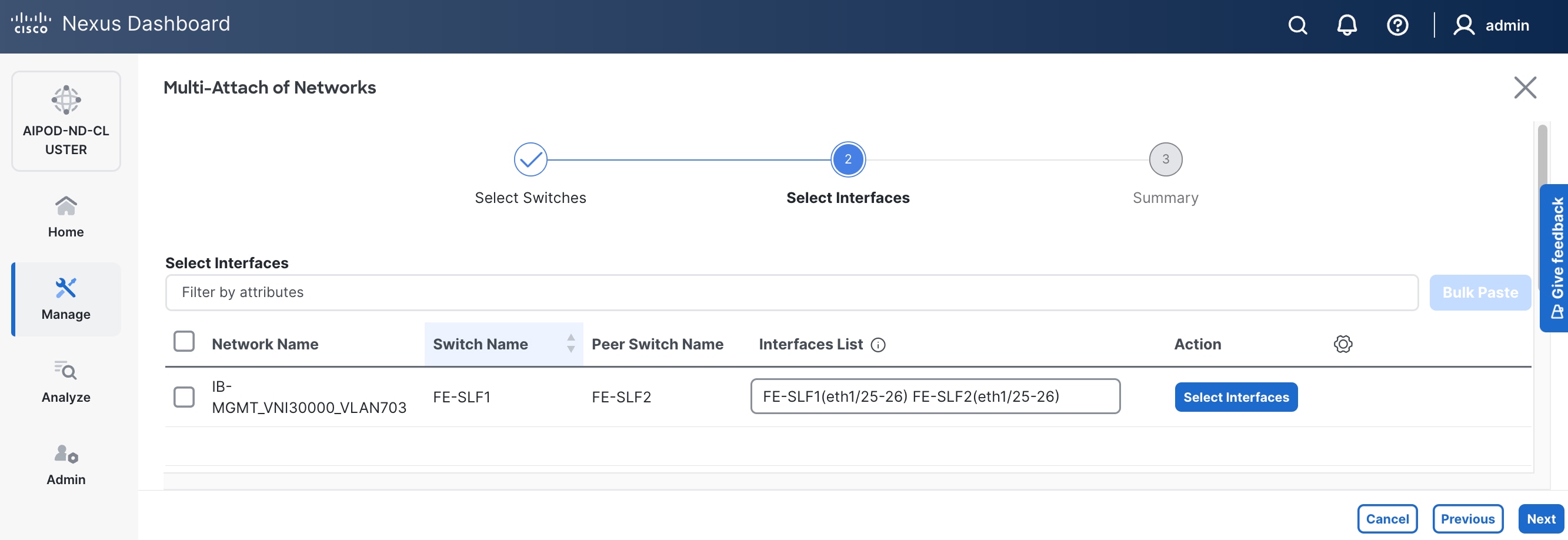

Step 6. Deploy the network on the storage leaf switch pair.

Step 7. Click Next.

Step 8. Select the network and click Select interfaces on the right.

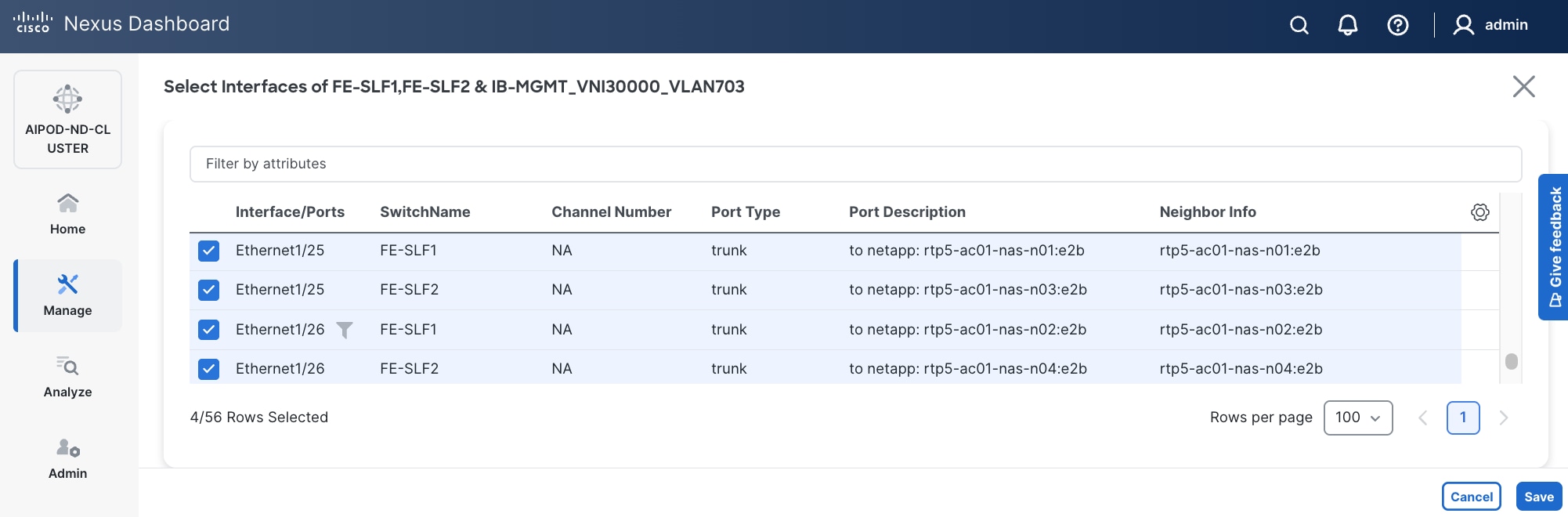

Step 9. Select the interfaces from the list.

Step 10. Click Save.

Step 11. Click Next.

Step 12. Click Save.

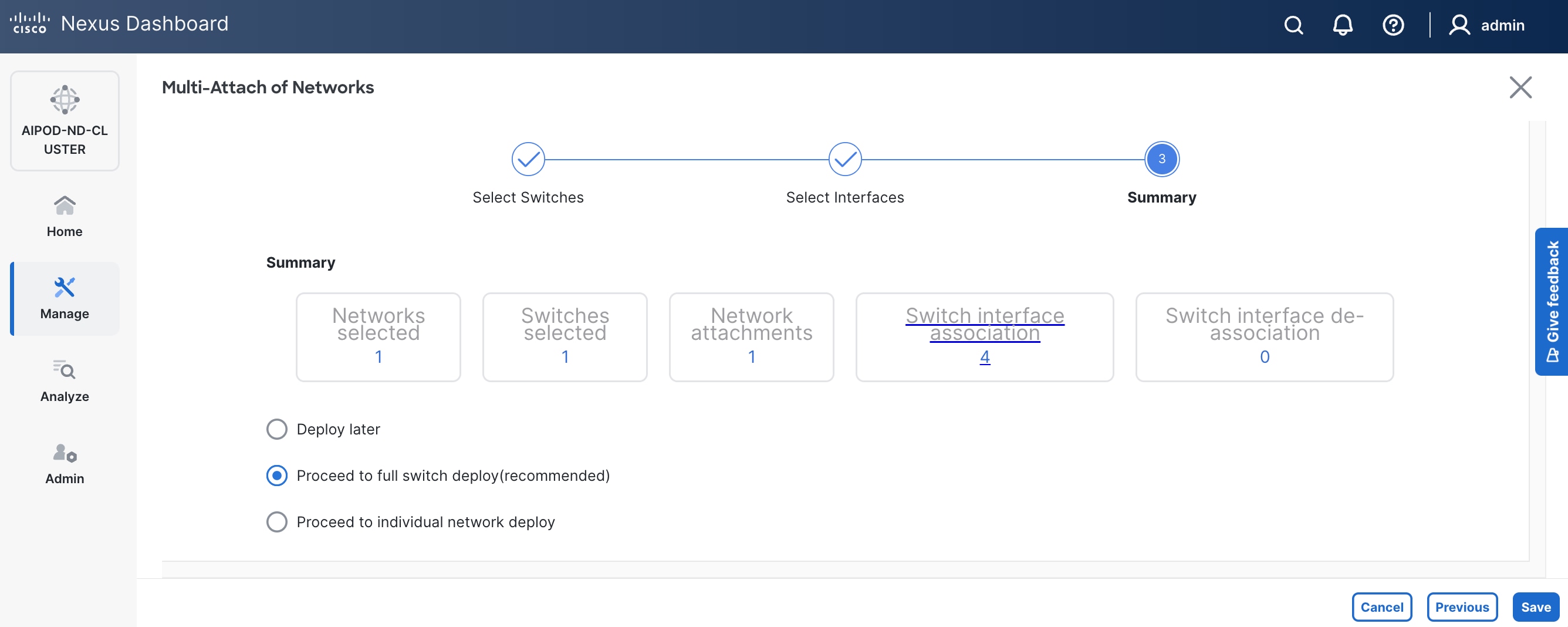

Step 13. Click Pending Config to see the configuration being deployed. The pending configuration on one leaf switch is provided as a reference at the end.

Step 14. Click Deploy All.

Step 15. Click Close.

Step 16. Click the Network name to verify that the network was successfully deployed on the relevant switches and interfaces.

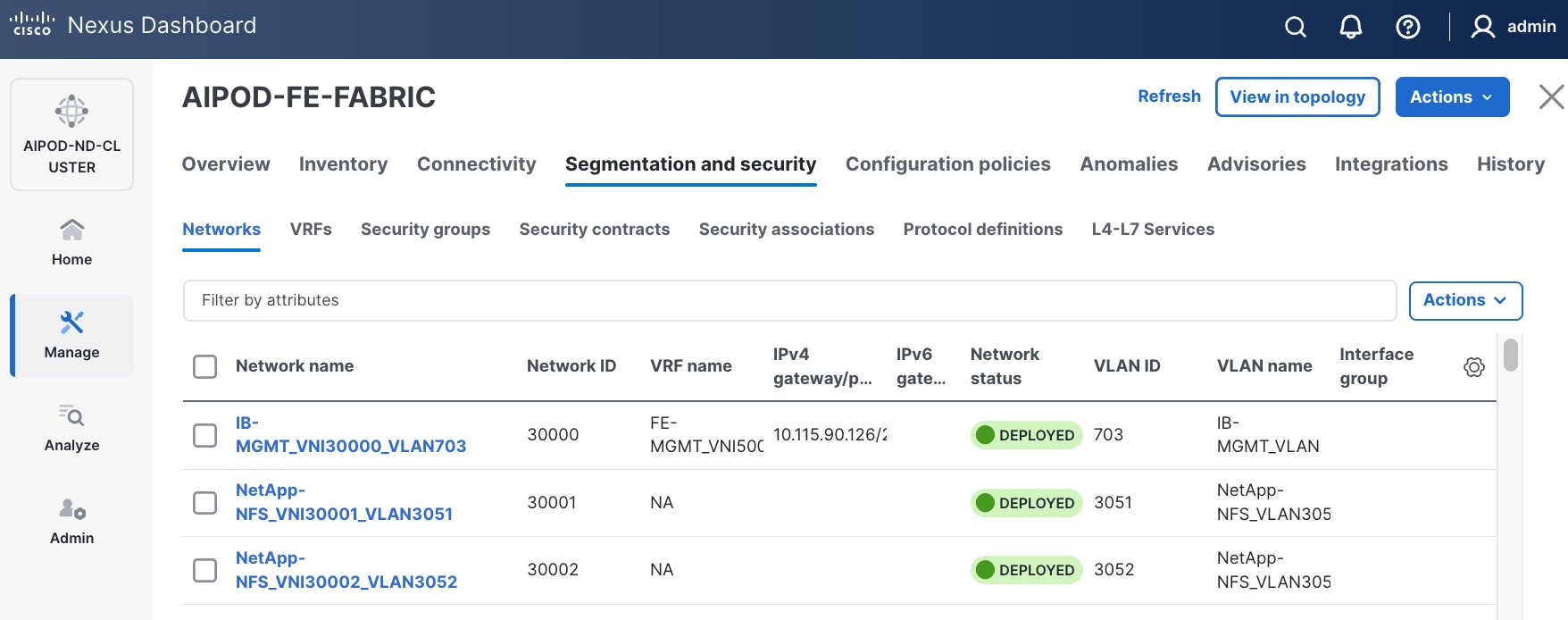

Step 17. Verify the status and that the network is deployed.

The configuration deployed on one storage leaf switch is provided below as a reference:

Enable NFS Storage Data Access to NetApp Storage

Table 16. Setup Parameters for FE Fabric: NFS Storage Data Access to NetApp Storage

| Parameter Type |

Parameter Name | Value |

Parameter Type |

| NFS Storage Data Network(s) |

|

|

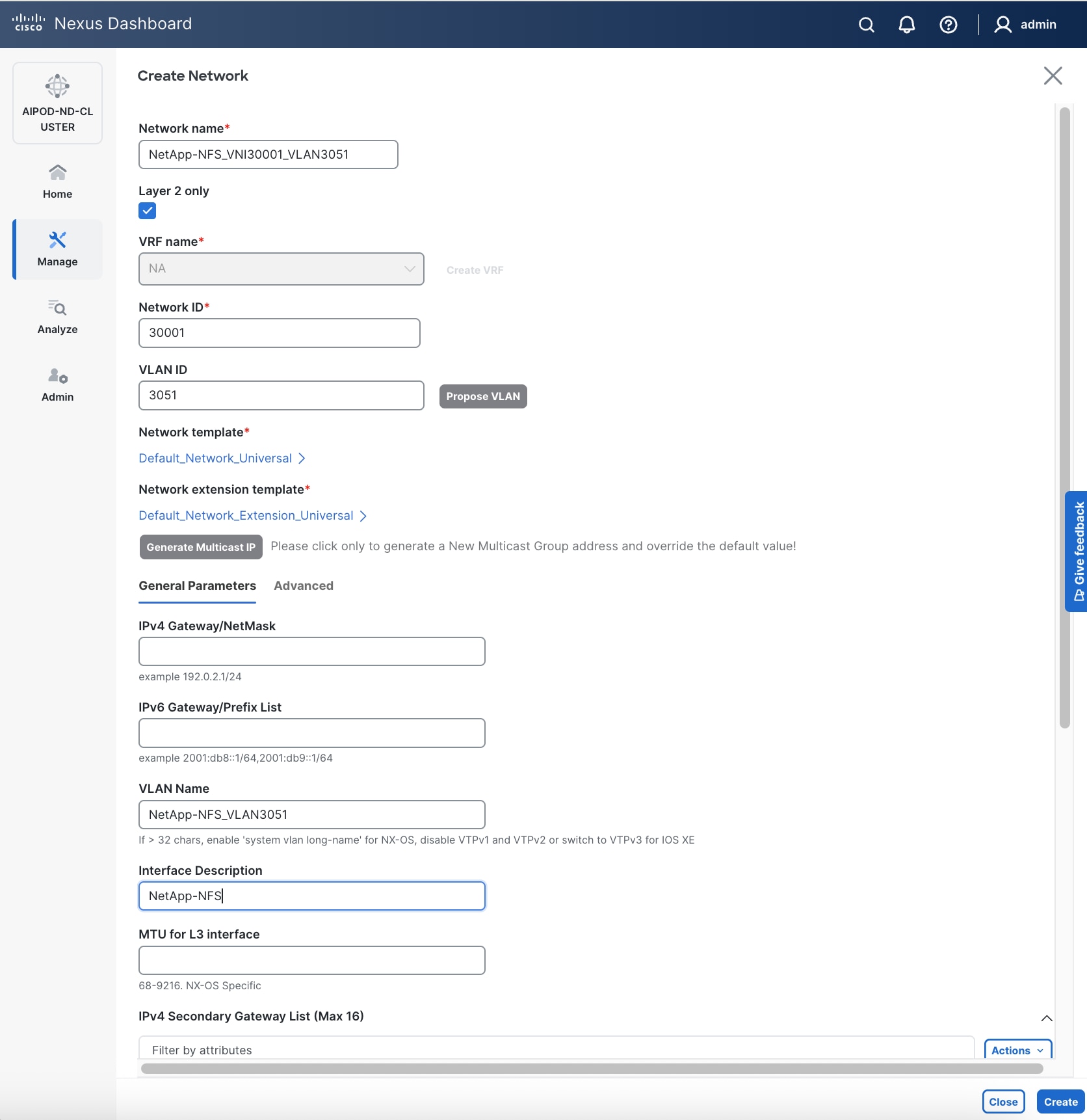

| Name |

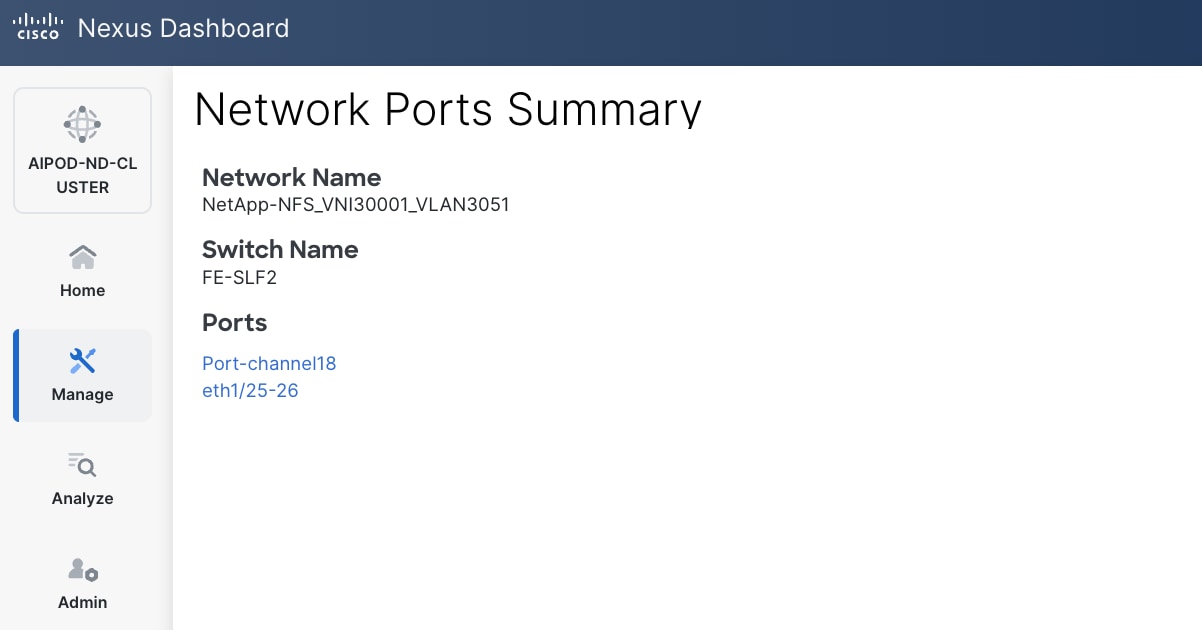

NetApp-NFS_VN30001_VLAN3051 |

|

| Layer 2 Only |

Enable checkbox |

|

| Network ID |

30001 |

|

| VLAN ID |

3051 |

|

| VLAN Name |

NetApp-NFS_VLAN3051 |

|

| Interface Description |

NetApp-NFS |

|

| Name |

NetApp-NFS_VN30002_VLAN3052 |

|

| Layer 2 Only |

Enable checkbox |

|

| Network ID |

30002 |

|

| VLAN ID |

3052 |

|

| VLAN Name |

NetApp-NFS_VLAN3052 |

|

| Interface Description |

NetApp-NFS |

|

| NetApp Storage |

|

|

| vPC Leaf Switch Pair |

FE-SLF1, FE-SLF2 |

|

| Port |

e1/25 - 26 |

|

| Leaf Switch Pair |

FE-LF1, FE-LF2 |

|

| vPC |

15,16, 111-114 |

To Management UCS-X Direct, UCS C885A GPU Nodes |

| Port Channel |

15,16, 111-114 |

Members: e1/1-4 |

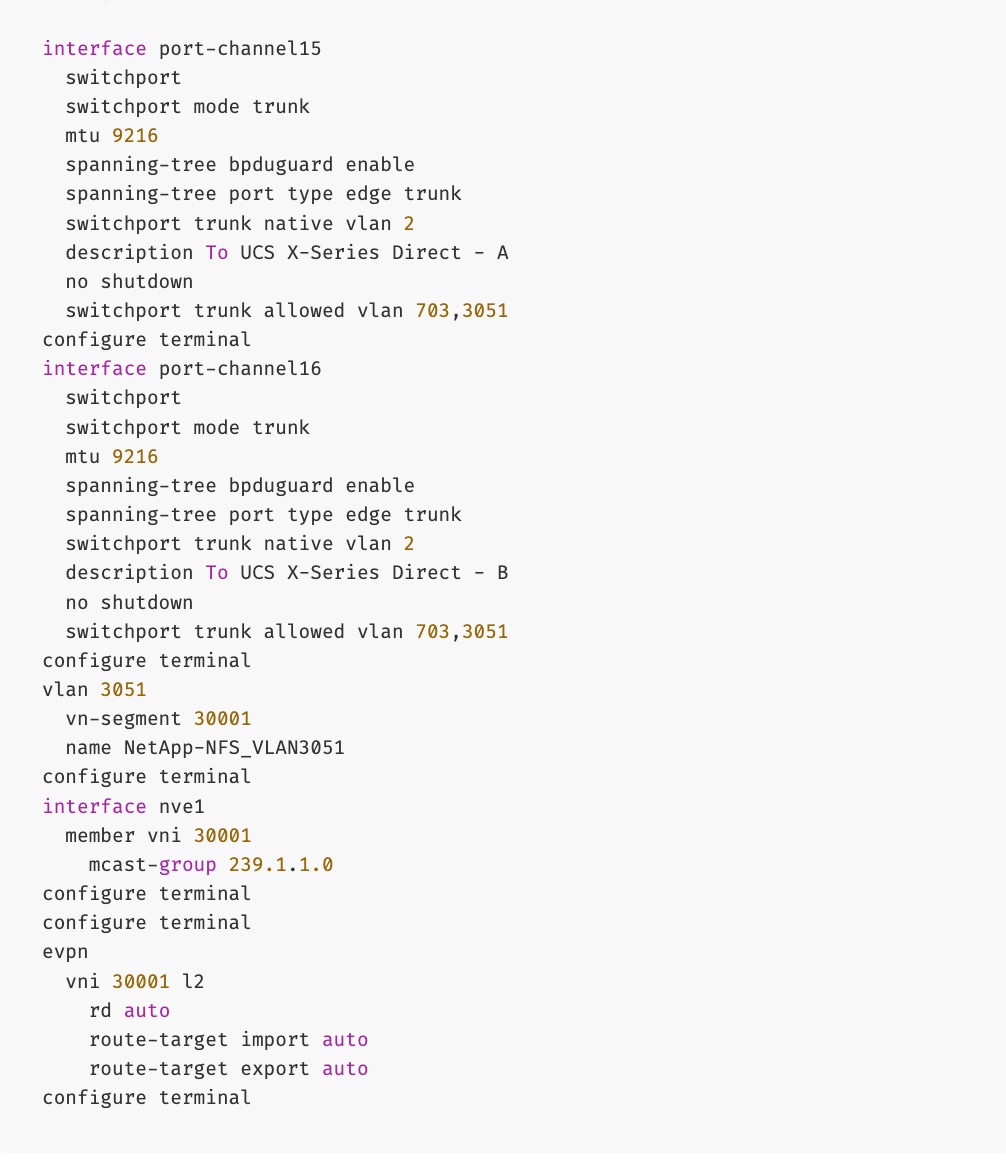

To enable NFS storage data access to NetApp storage, follow the procedures below.

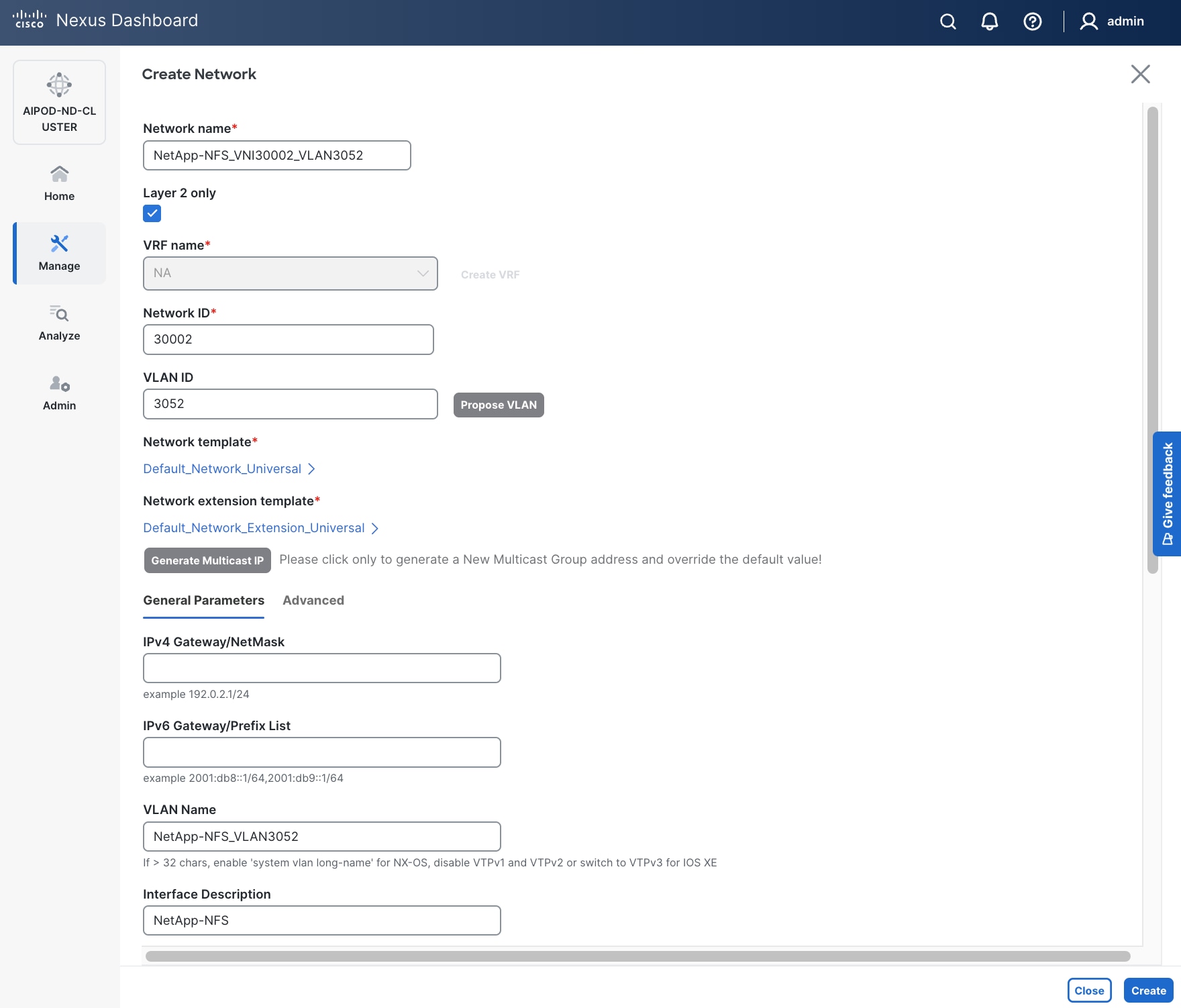

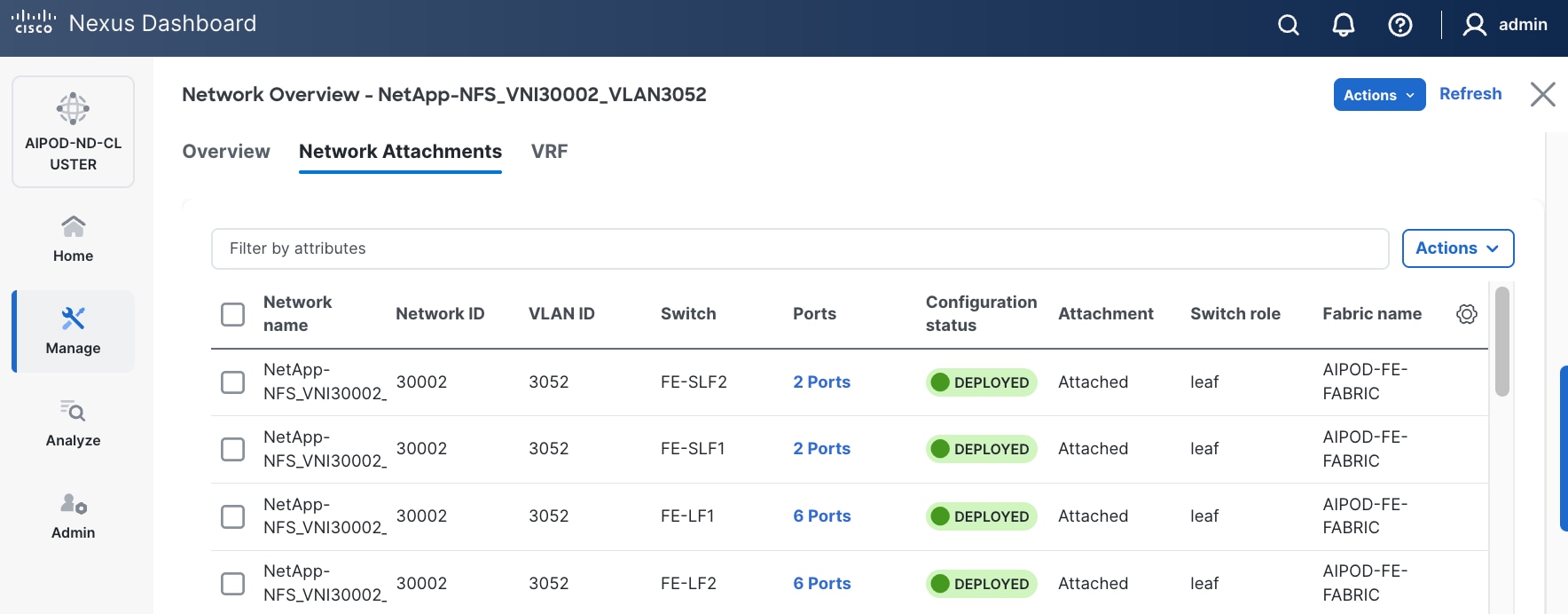

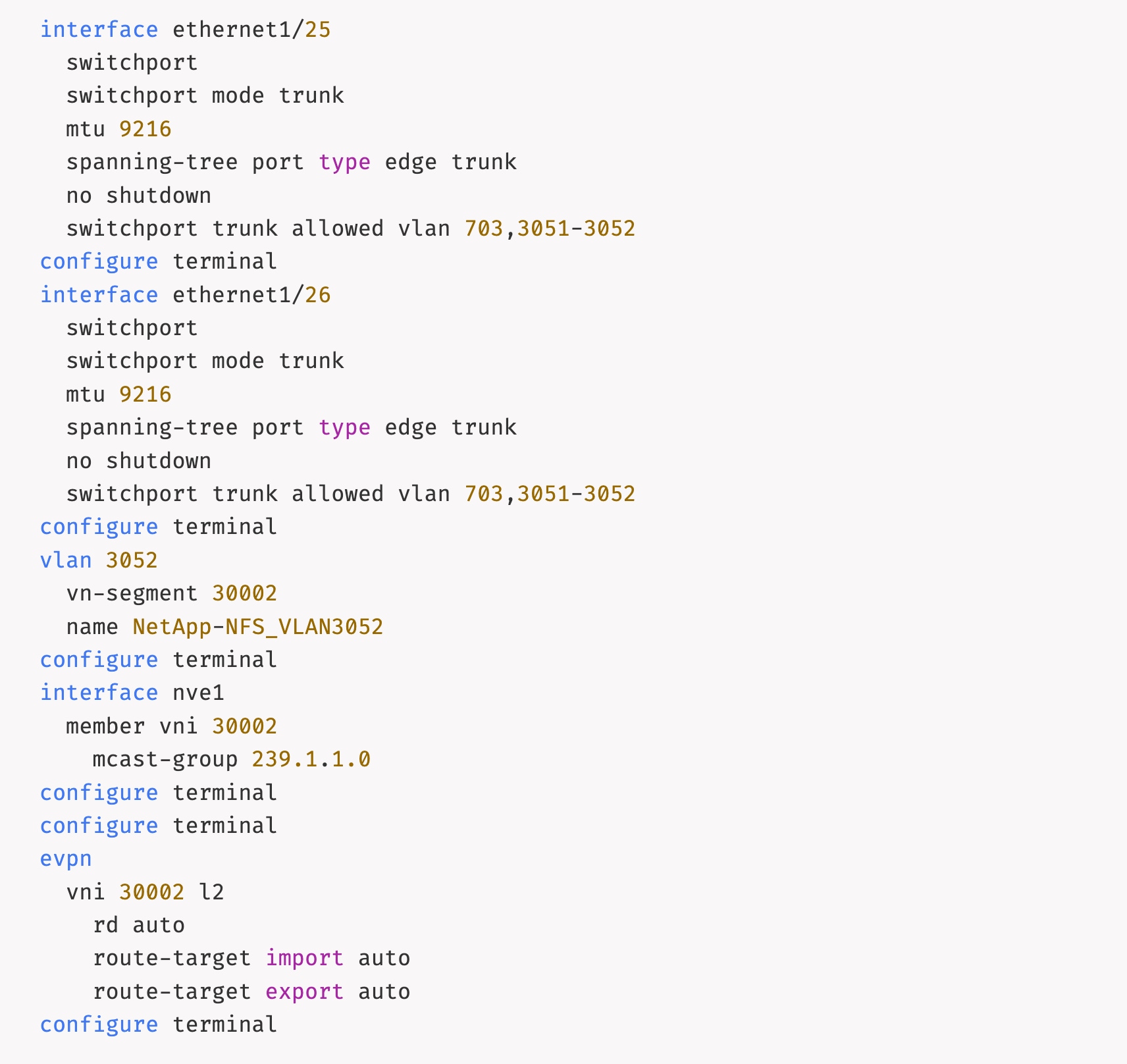

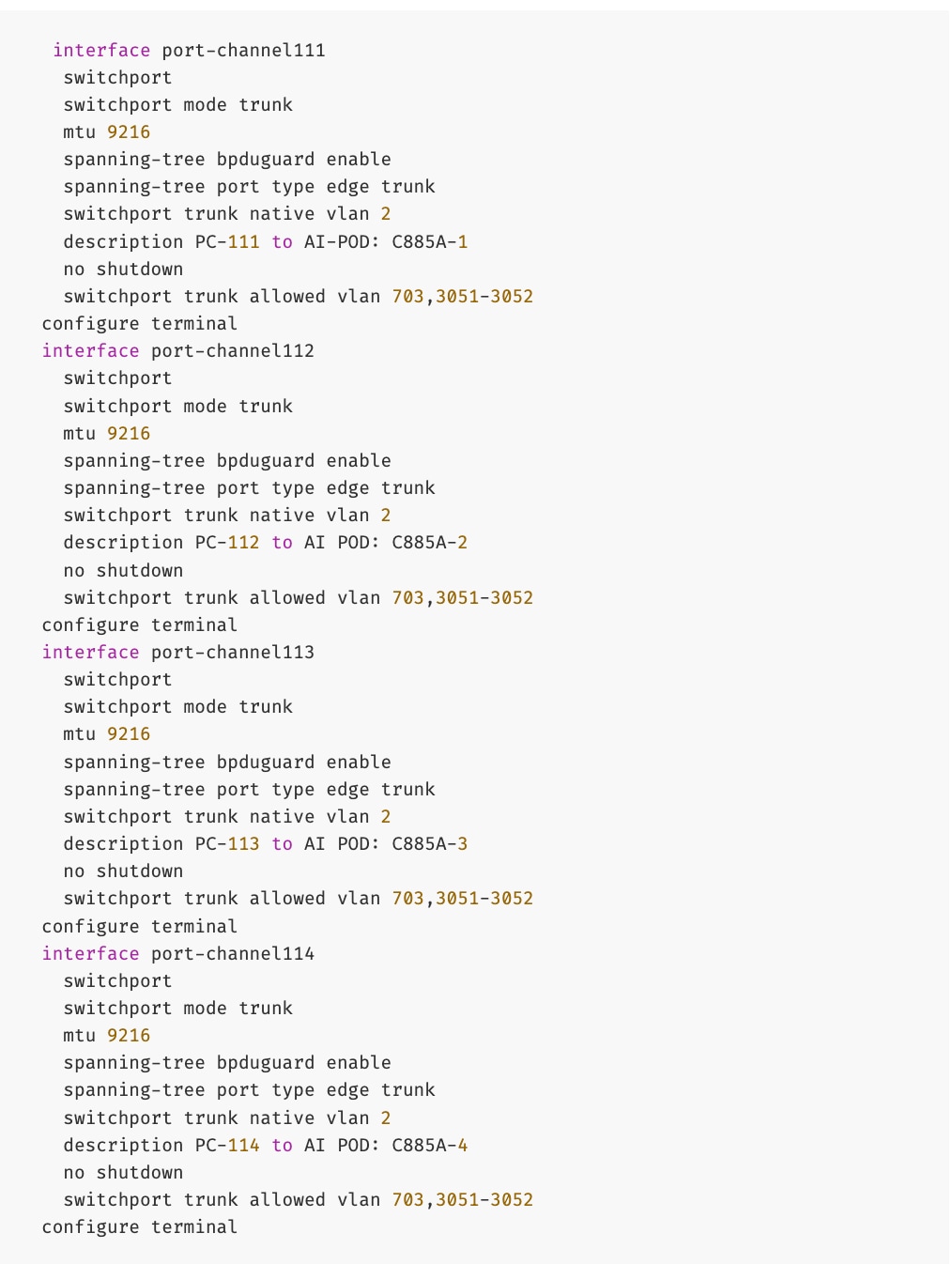

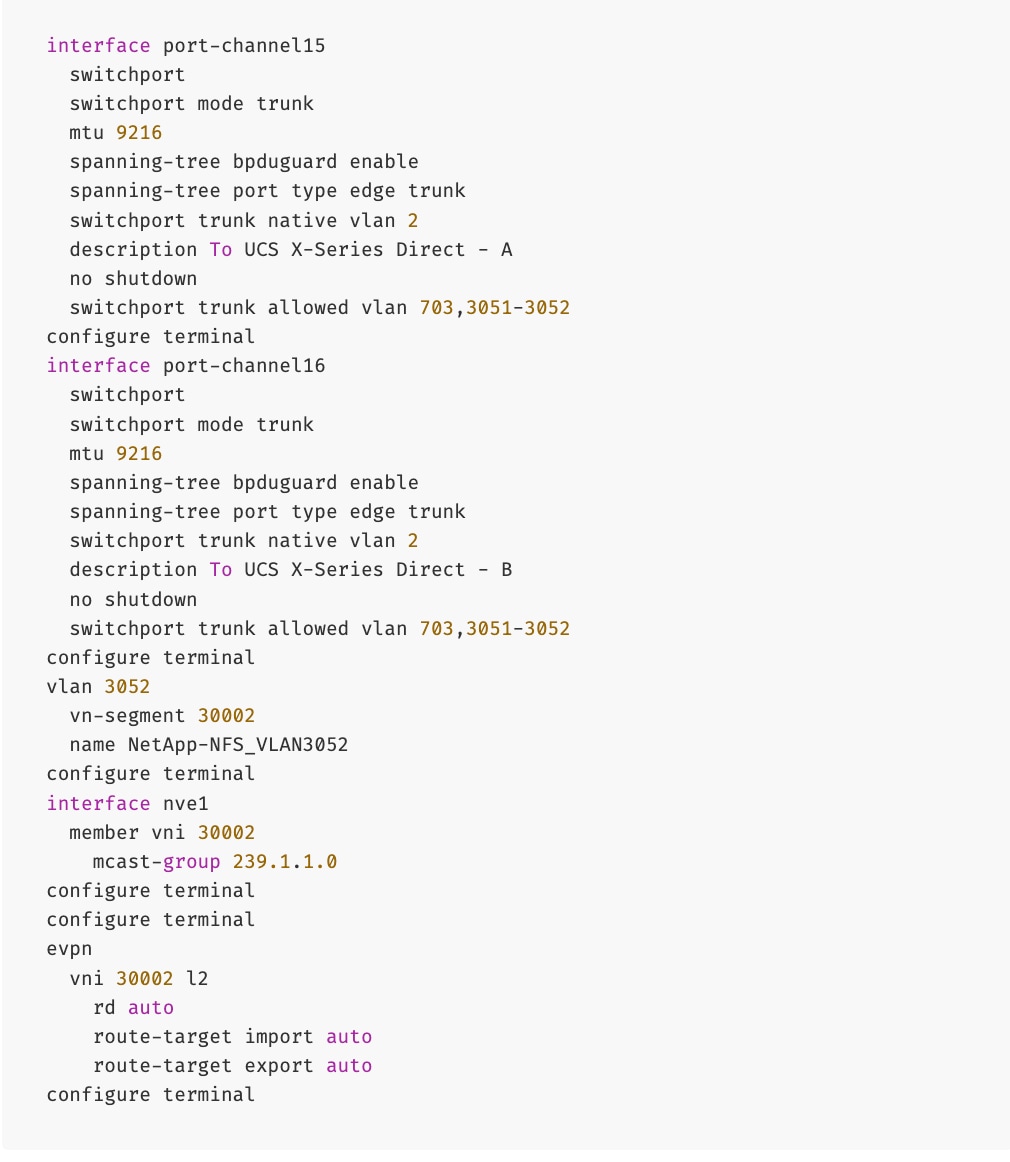

Procedure 1. Enable NFS Storage Data Access to NetApp Storage using the first NFS VLAN

Step 1. Use a web browser to navigate to Nexus Dashboard. Use the management IP of any node in the ND cluster. Log in using admin account.

Step 2. From the left navigation menu, go to Manage > Fabrics.

Step 3. Select the FE fabric and go to Segmentation and Security > Networks tab.

Step 4. Click the lower of the two Actions buttons and select Create from the menu.

Step 5. In the Create Network window, specify the following:

● Network name

● Enable checkbox for Layer 2 only Layer 2 only.

● Network IDNetwork ID or use default.

● VLAN ID VLAN ID or click Propose VLAN Propose VLAN to let system define a VLAN.

● In the General Parameters tab, specify VLAN Name and Interface Description.

Step 6. Click Create to create the NFS Storage Data Network.

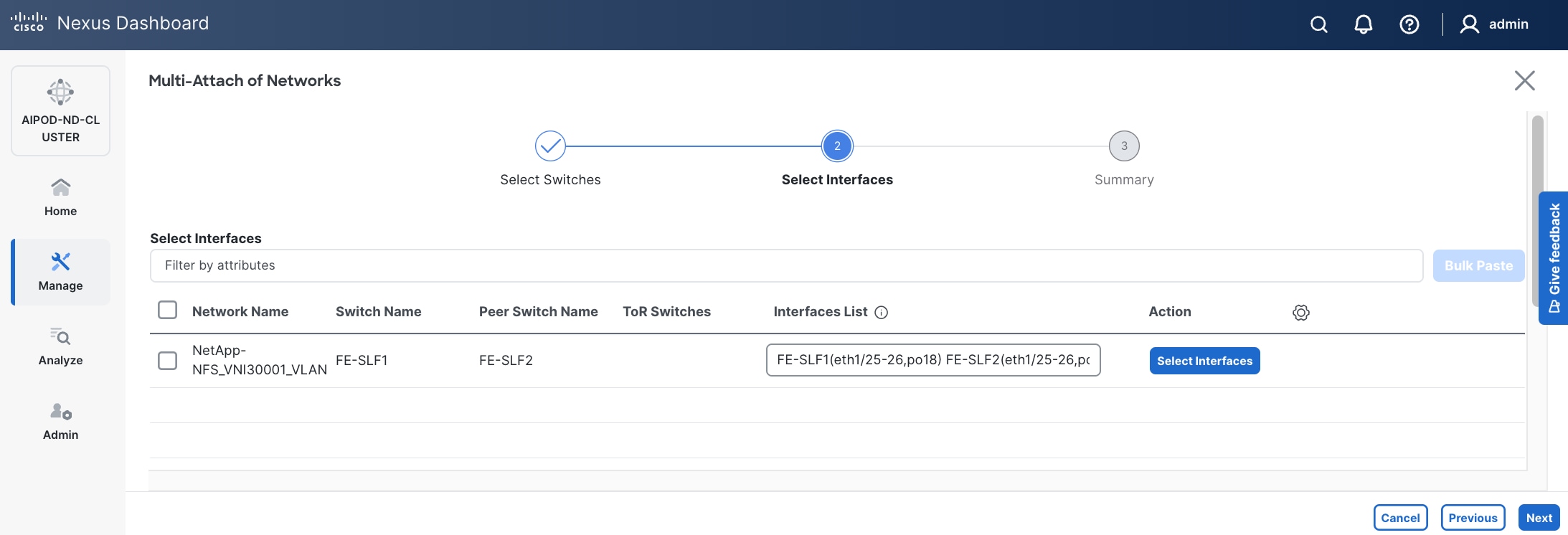

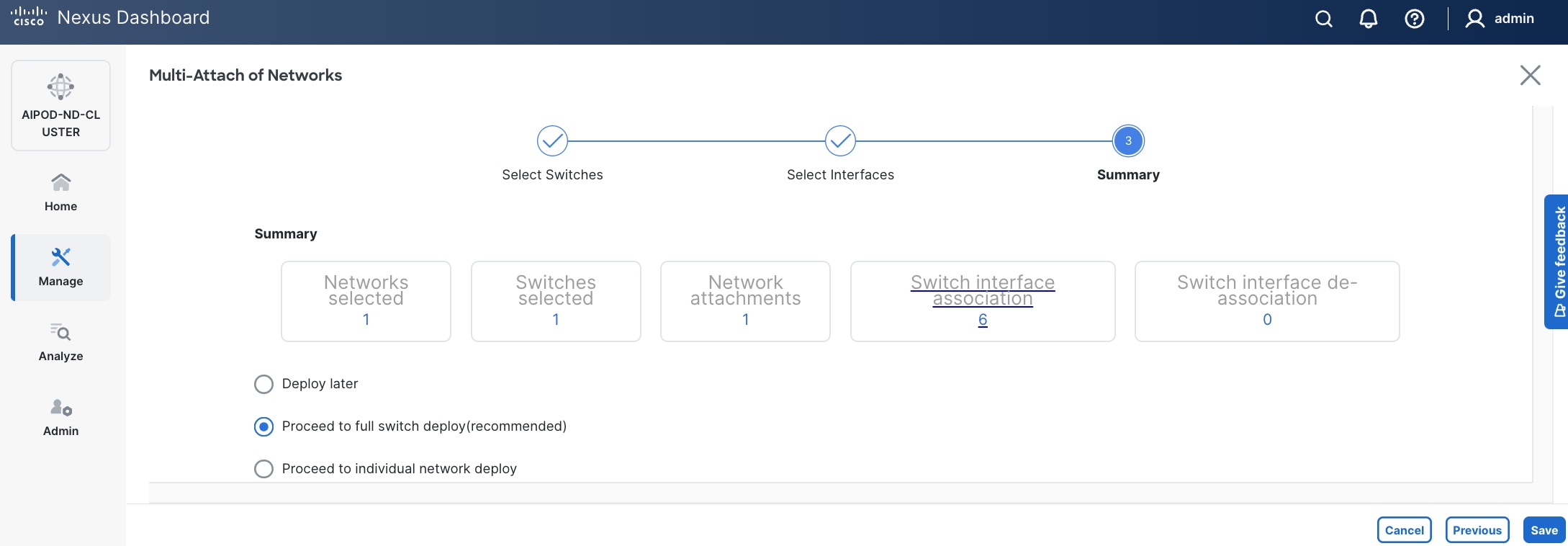

Step 7. Select newly created network. Click the lower of the two Actions button and select Multi-attach from the list.

Step 8. Select the compute and storage Leaf switch pairs.

Step 9. Click Next.

Step 10. Select each Network Name in the list and click Select interfaces on the right to deploy this network as a trunked VLAN on the selected interfaces. This should include the ports on the compute and storage leaf pair that connect to UCS nodes and NetApp storage, respectively. Additional interfaces can be added later as needed.

Step 11. Click Next.

Step 12. Click Save.

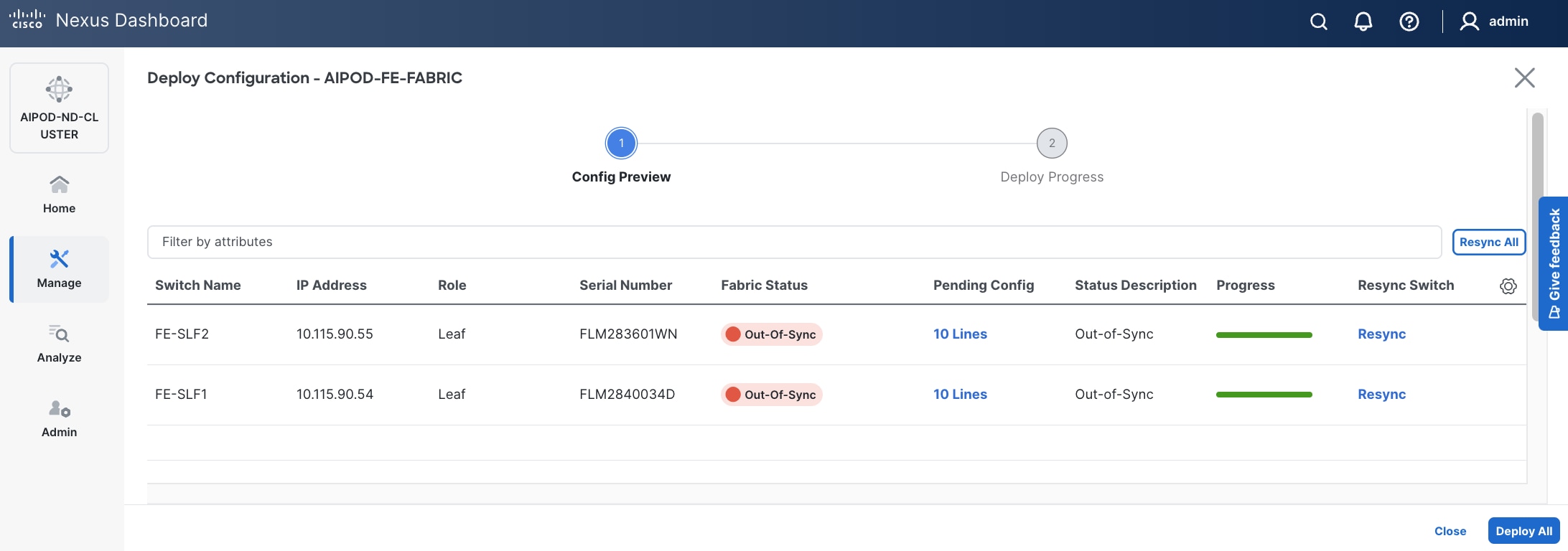

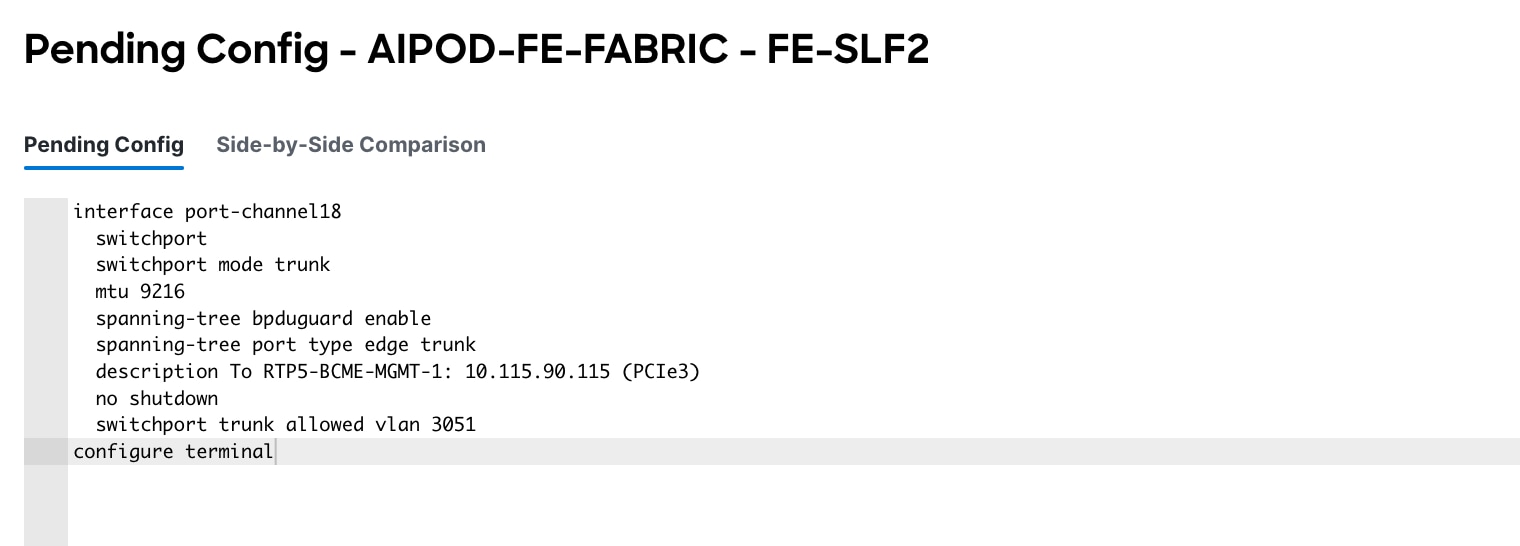

Step 13. Click Pending Config to see the configuration being deployed. The pending configuration on one leaf switch is provided as a reference at the end.

Step 14. Click Deploy All.

Step 15. Click Close.

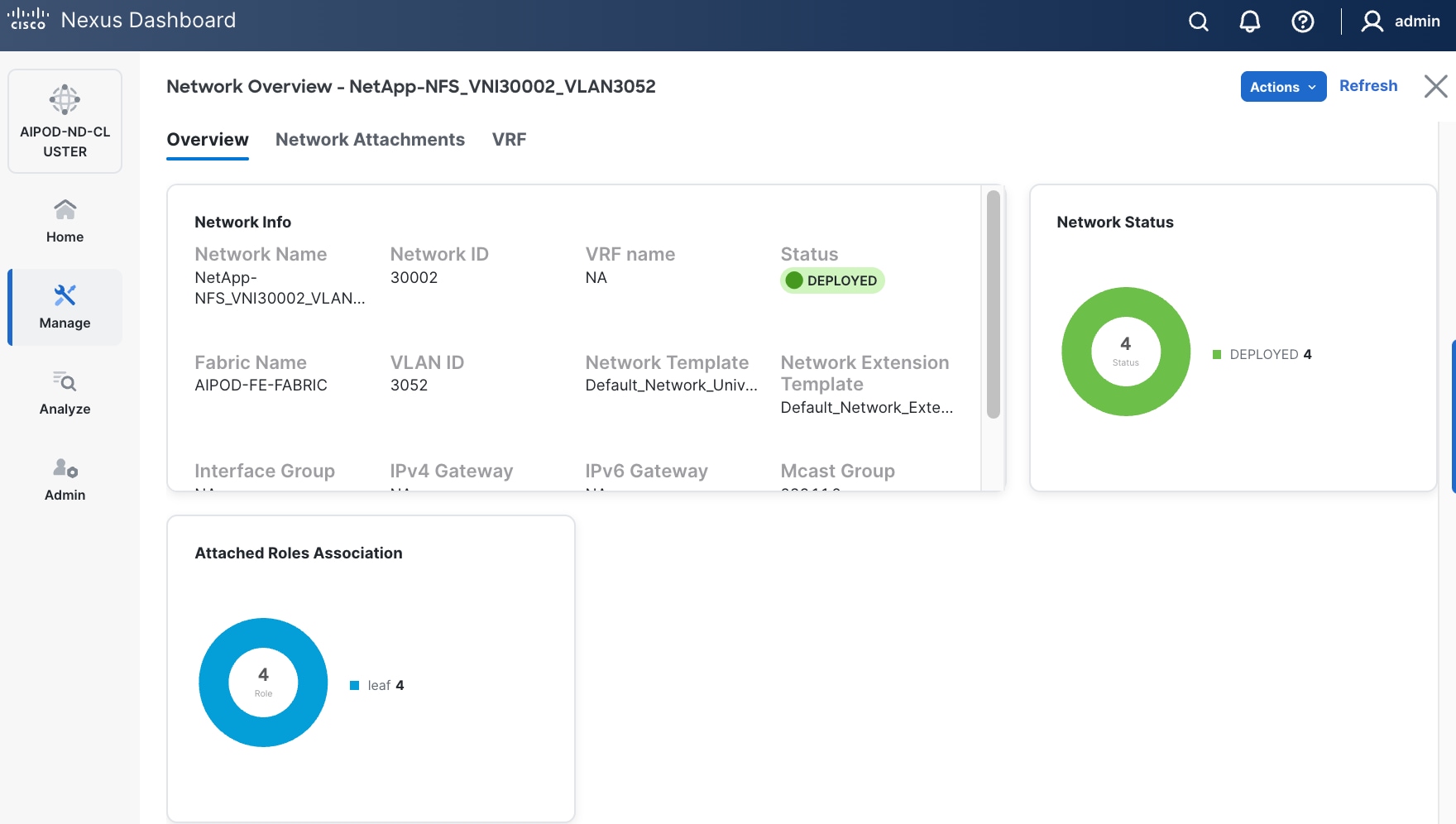

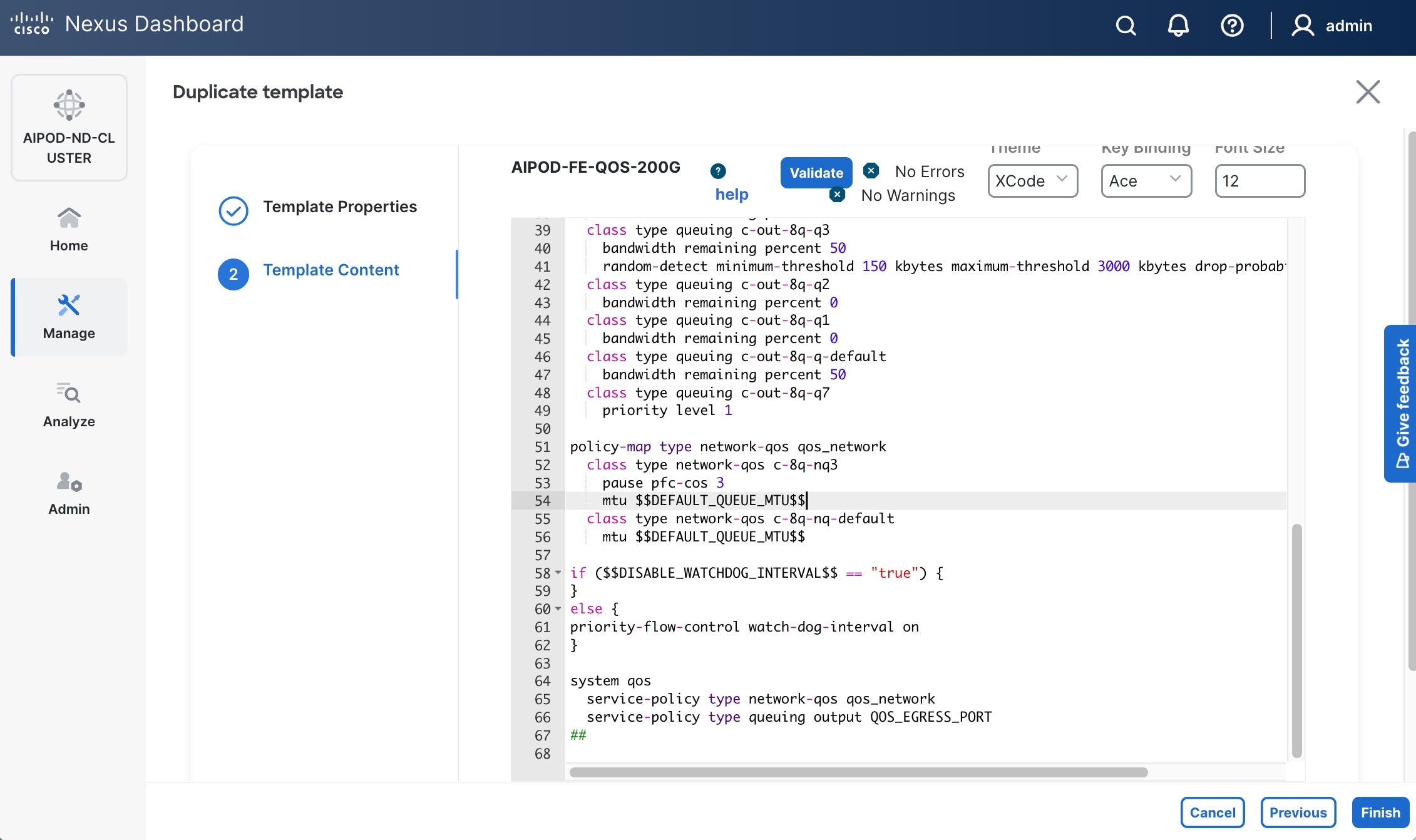

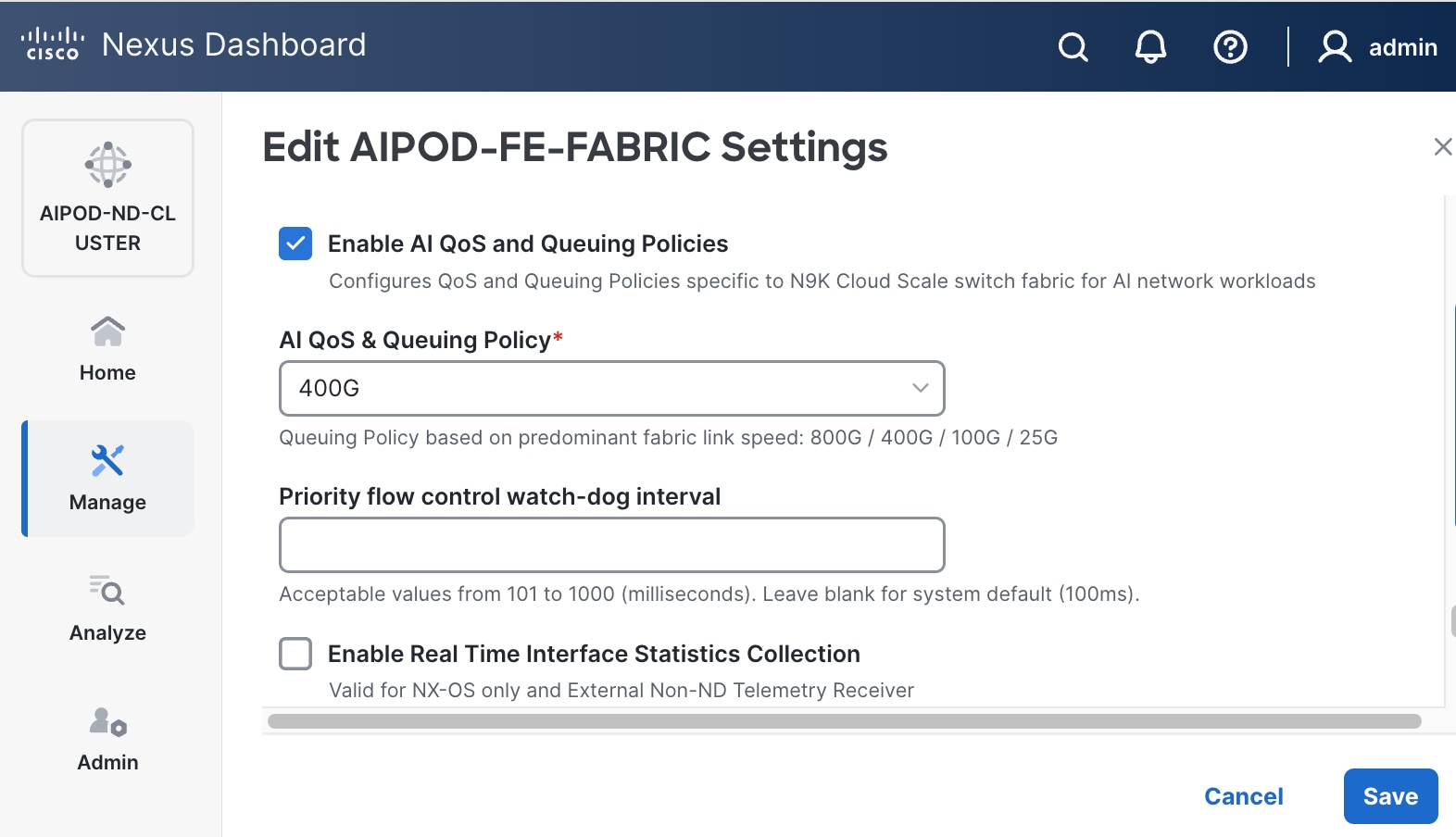

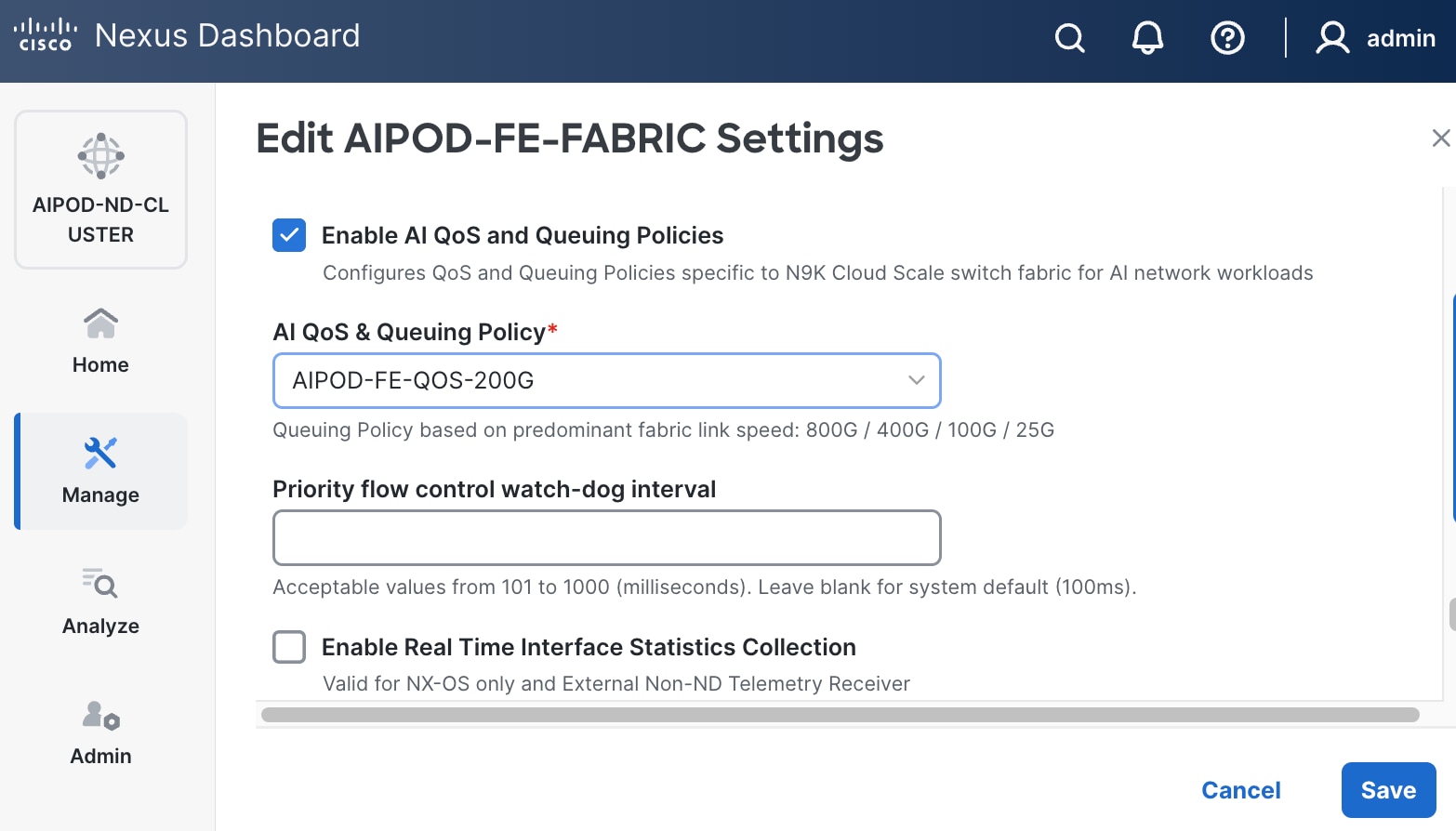

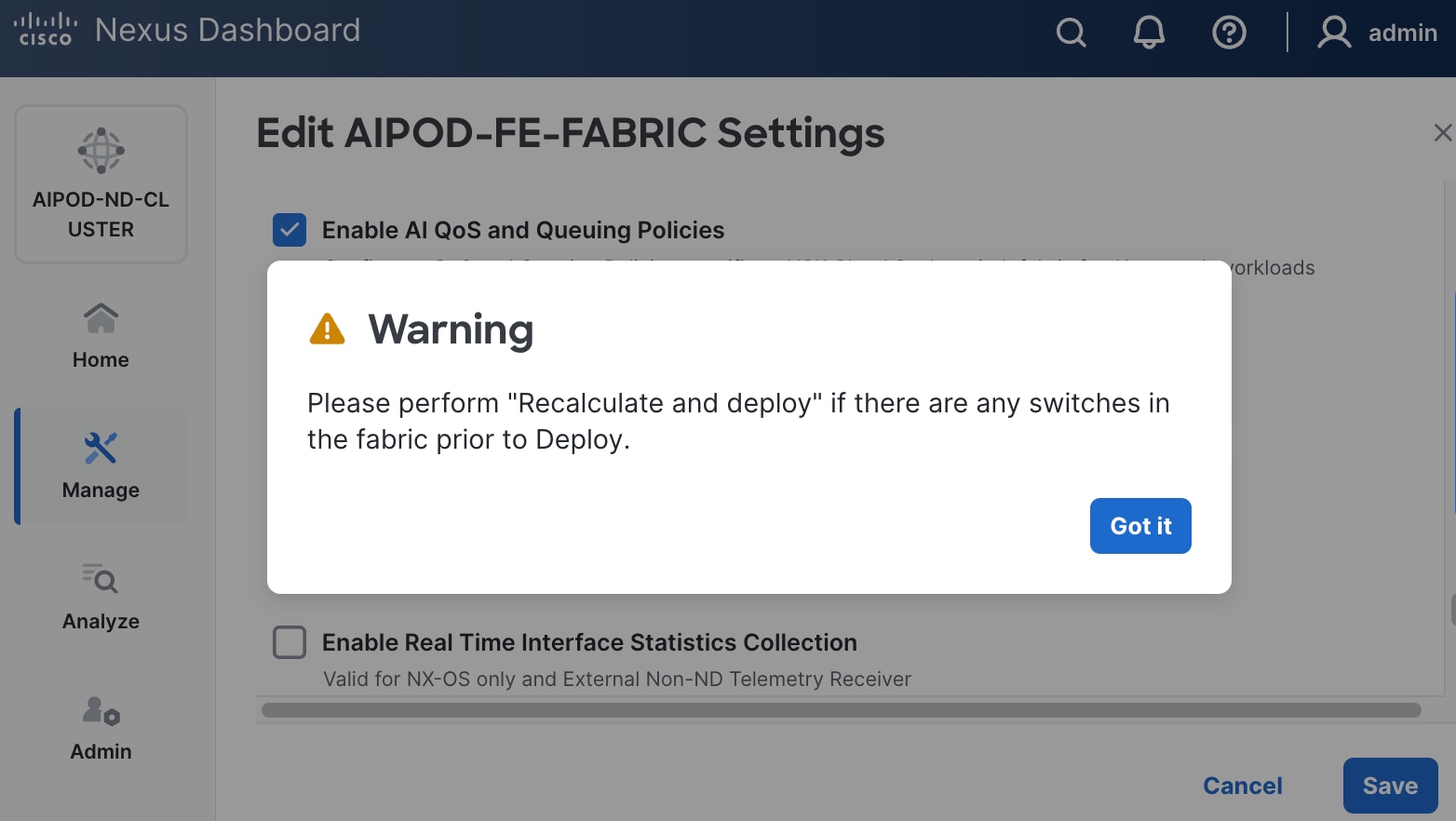

Step 16. Click the Network name to verify that the network was successfully deployed on the relevant switches and interfaces.