Overview

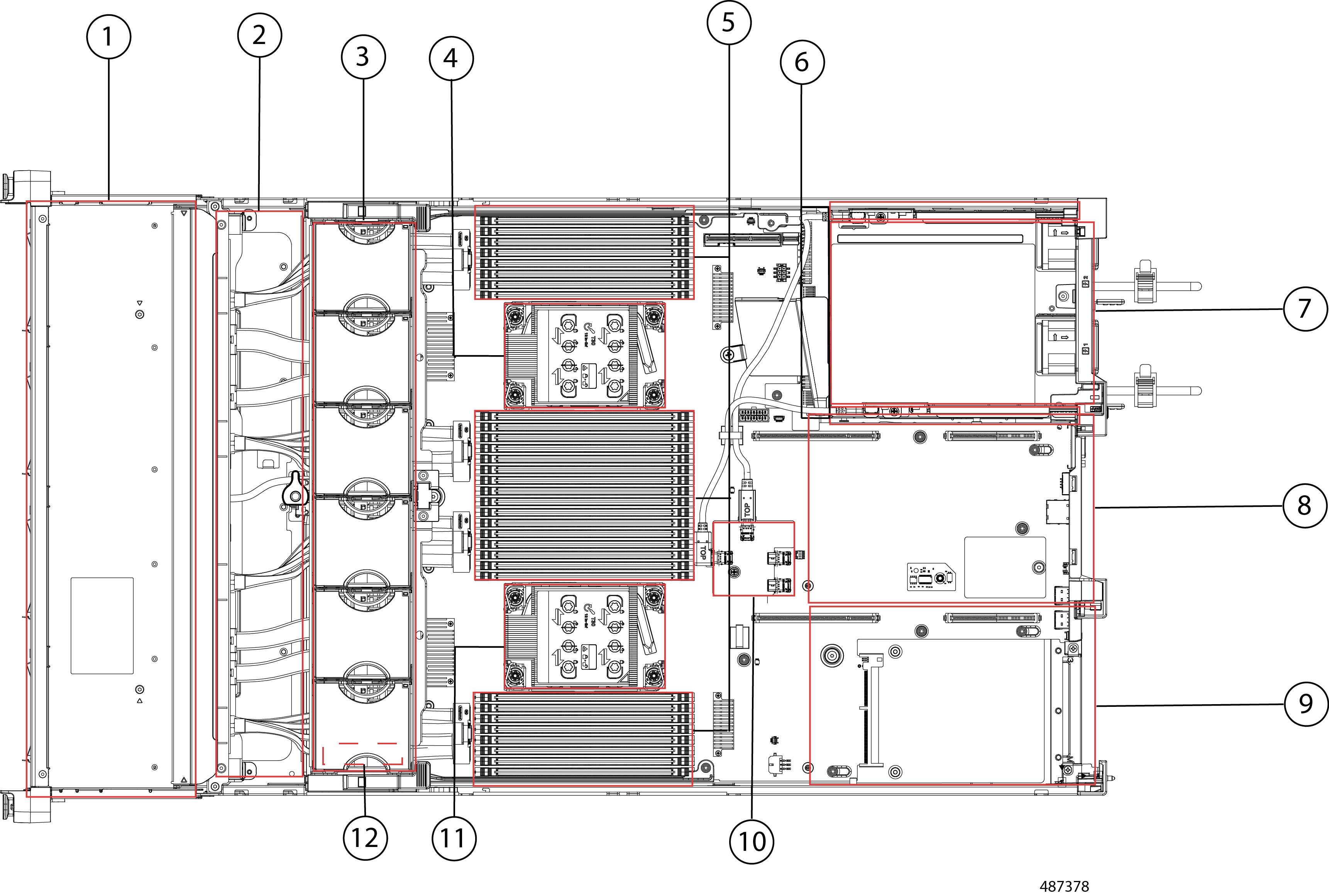

The Cisco UCS C240 M8 is a 2U rack server chassis that can operate both in standalone environments and as part of the Cisco Unified Computing System (Cisco UCS).

Each Cisco UCS C240 M8 has two CPU sockets that support Intel® Xeon® 6 Scalable Processors in either one- or two-CPU configurations. These processors feature up to 86 cores per CPU, up to 350 W TDP per socket, four UPI 2.0 links at up to 24 GT/s, 8 DDR5 memory channels, and a maximum of 88 PCIe 5.0 lanes per CPU.

Additionally, the server supports the following features with one CPU or two identical CPUs:

- 32 DDR5 DIMMs (RDIMMs) are supported on a dual-CPU server, and 16 DDR5 DIMMs (RDIMMs) on a single-CPU server:
  - Up to 6400 MT/s at 1DPC (one DIMM per channel)
  - Up to 5200 MT/s at 2DPC (two DIMMs per channel)
  - Up to 8000 MT/s with MRDIMMs
- 16 DIMMs are supported per CPU, for a maximum system memory of 8 TB on a dual-CPU server (using 256 GB DDR5 DIMMs).
- DDR5 DIMM capacities vary based on the CPU type installed in the server. For more information, see the Cisco UCS Intel M8 Memory Guide.
- Intel Xeon 6 Scalable Processors support 16, 32, 48, 64, 96, 128, and 256 GB DDR5 DIMMs per CPU socket.
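The memory figures above follow from the processor description: 8 DDR5 channels per CPU at up to two DIMMs per channel gives 16 DIMM slots per CPU, and 32 slots × 256 GB = 8192 GB, or 8 TB, on a dual-CPU server. The Python sketch below reproduces that arithmetic as a planning aid only. The constant and function names are hypothetical (not part of any Cisco tool), and it assumes every slot is populated with the largest supported (256 GB) DIMM.

```python
# Planning sketch of the C240 M8 memory arithmetic (hypothetical helper names).
# Inputs come from the text: 8 DDR5 channels per CPU, up to 2 DIMMs per
# channel (DPC), largest supported DIMM 256 GB, and the listed data rates.

CHANNELS_PER_CPU = 8
MAX_DPC = 2
MAX_DIMM_GB = 256

# Maximum data rate (MT/s) by population type, as listed for this server.
SPEED_MT_S = {"1DPC": 6400, "2DPC": 5200, "MRDIMM": 8000}


def dimm_slots(cpus: int) -> int:
    """Total DIMM slots for a one- or two-CPU server."""
    if cpus not in (1, 2):
        raise ValueError("the server supports one or two CPUs")
    return cpus * CHANNELS_PER_CPU * MAX_DPC


def max_memory_tb(cpus: int, dimm_gb: int = MAX_DIMM_GB) -> float:
    """Memory in TB (1 TB = 1024 GB) with every slot holding a dimm_gb DIMM."""
    return dimm_slots(cpus) * dimm_gb / 1024


for cpus in (1, 2):
    print(f"{cpus} CPU: {dimm_slots(cpus)} DIMMs, {max_memory_tb(cpus):g} TB max")
```

Running it prints 16 DIMMs and 4 TB for one CPU, and 32 DIMMs and 8 TB for two CPUs, matching the figures in the list. Note that fully populating all slots means running at 2DPC, so the 5200 MT/s rate applies in that case.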

The servers have different supported configurations, which differ based on the number and type of storage drives installed.

-

The servers can support small form factor (SFF), EDSFF (E3.S), and large form-factor (LFF) drives, most of which are front-loading into the server's drive cage, but one model of server supports mid-mount LFF drives.

-

Support for M.2 SSDs:

-

The server supports up to 2x M.2 SATA drives.

-

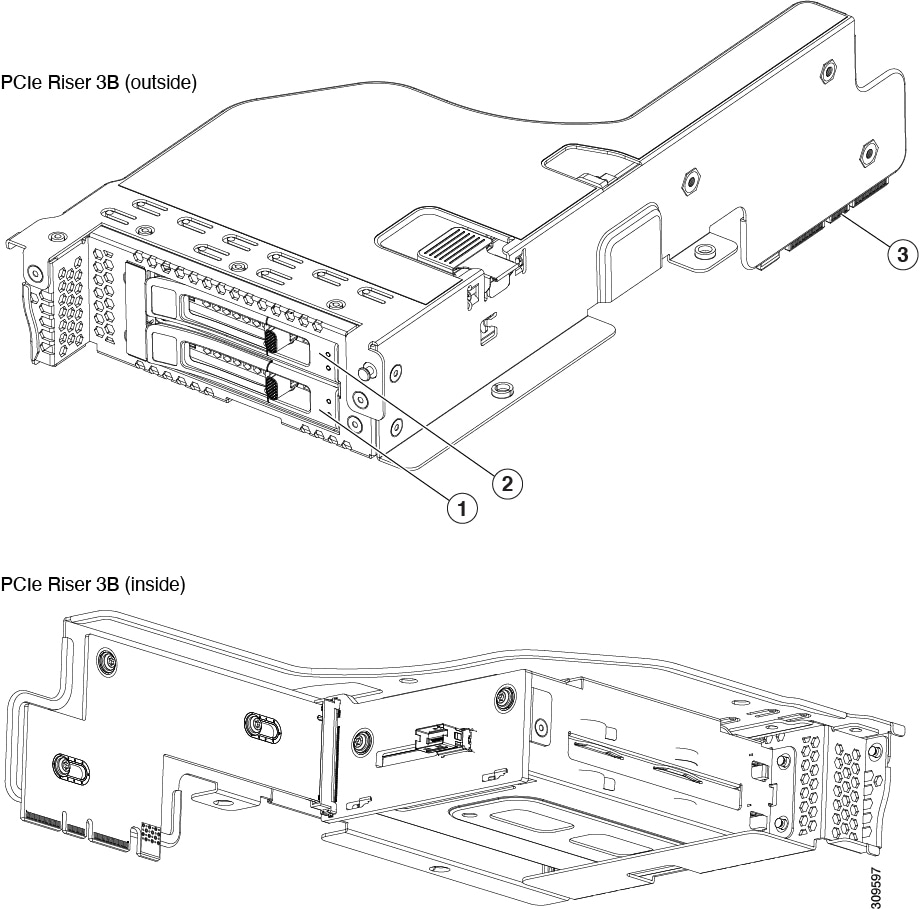

For boot RAID M.2 support: One M.2 Boot-Optimized RAID controller which can be internal or rear accessible. Rear M.2 RAID Controllers can be installed in either the mLOM slot or near Riser 3.

-

-

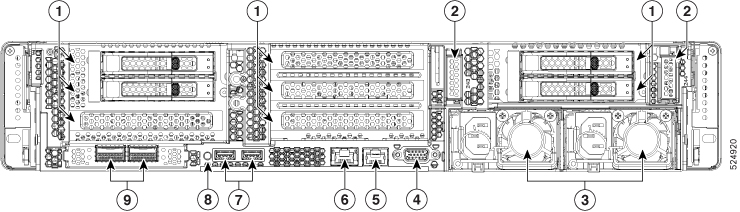

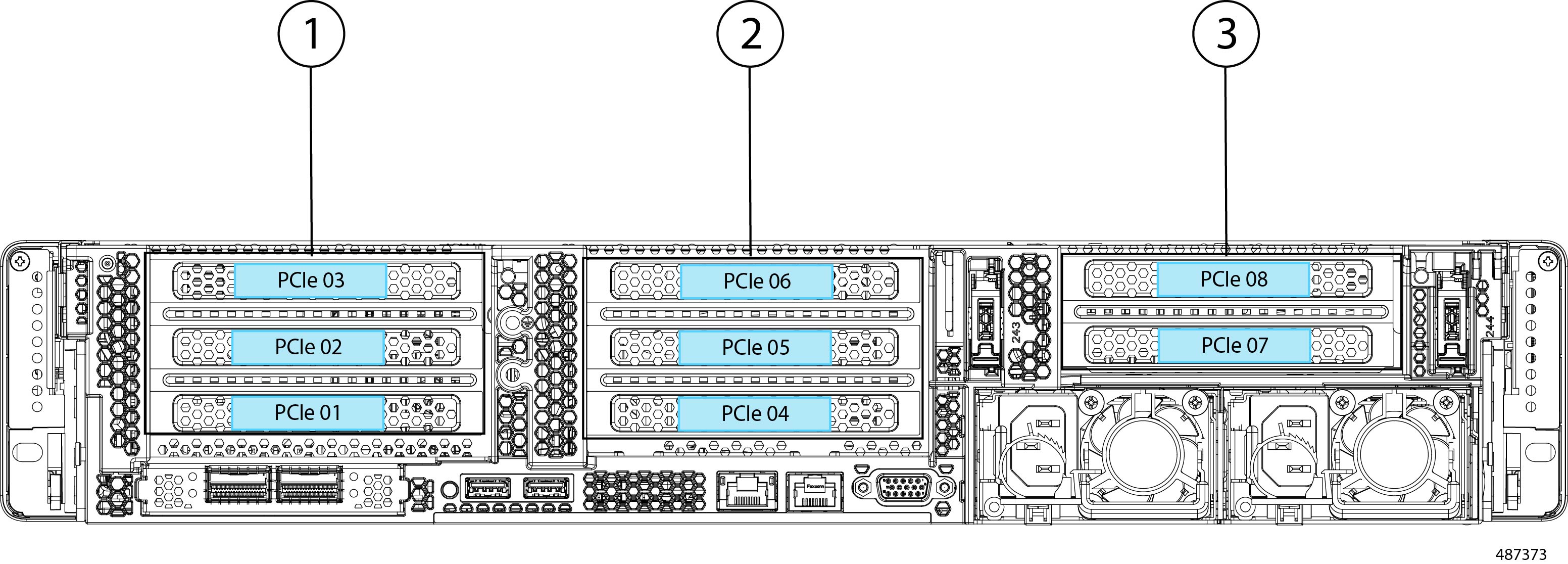

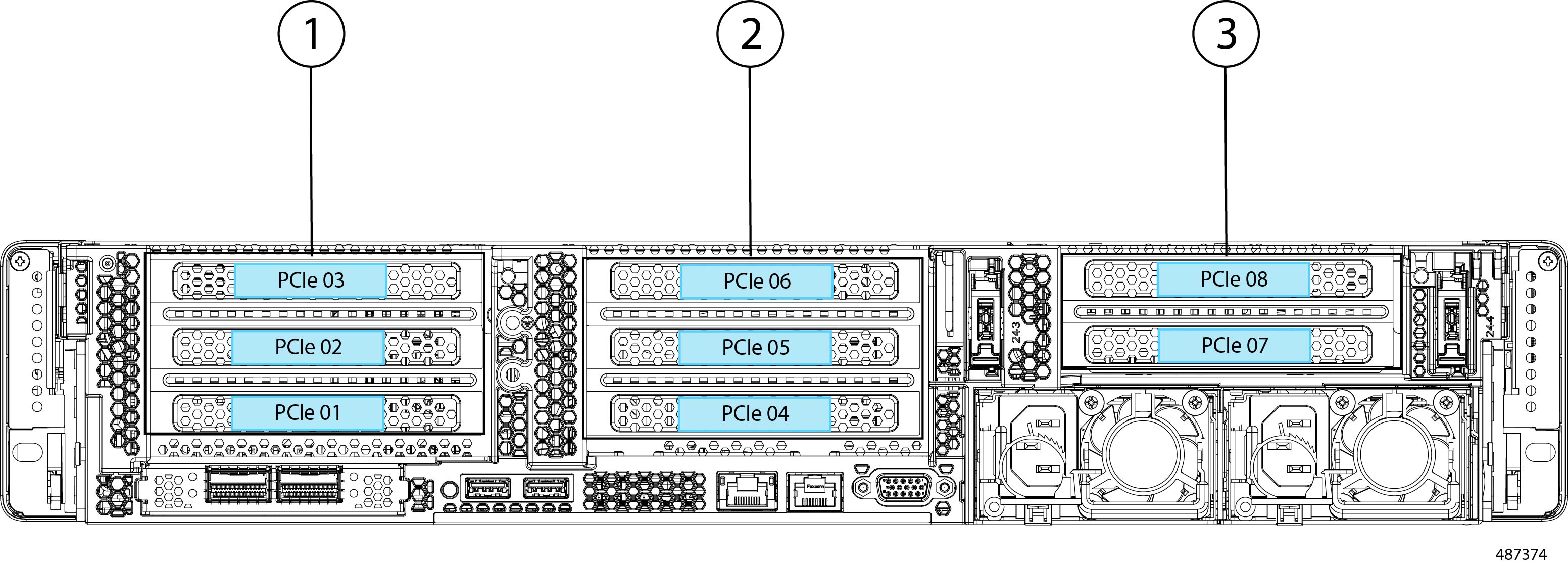

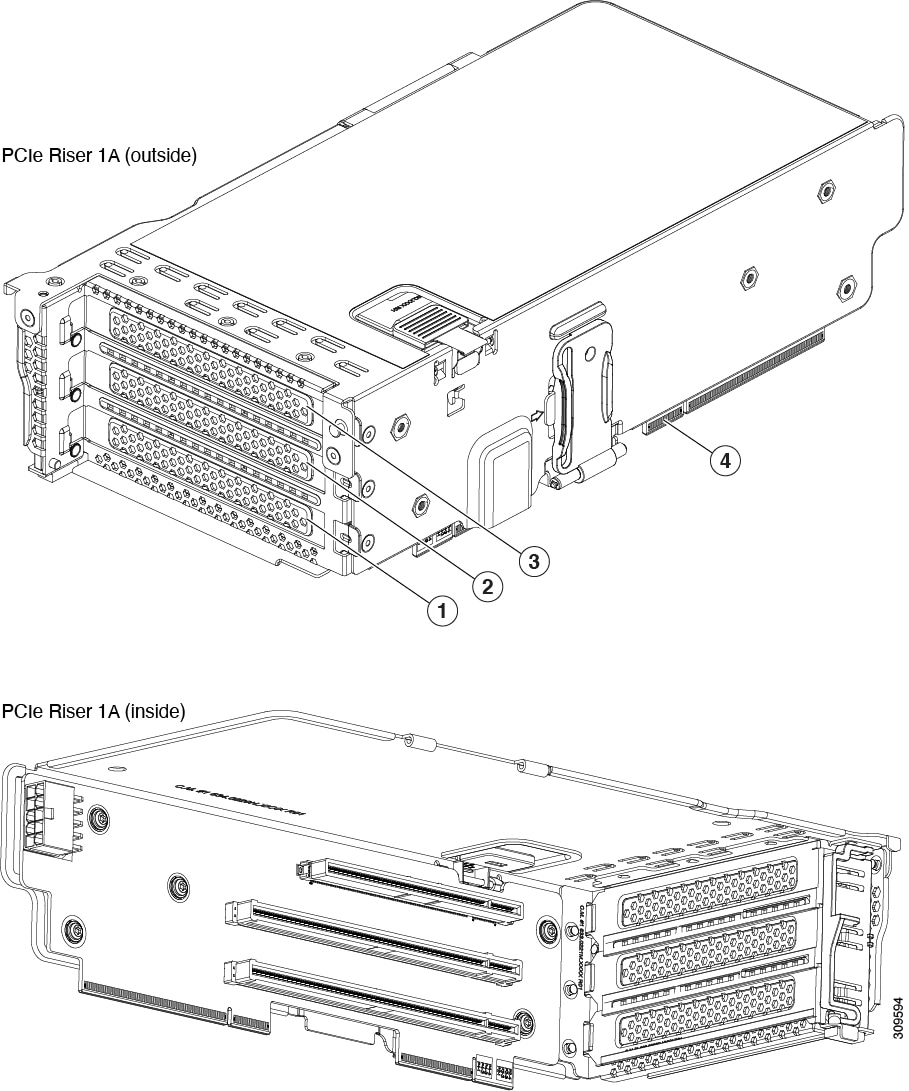

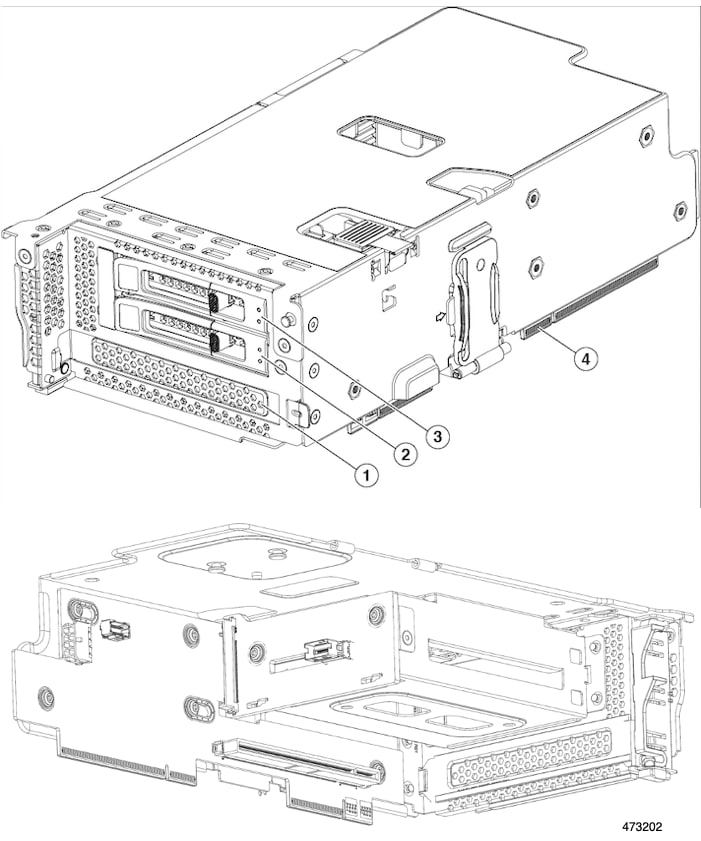

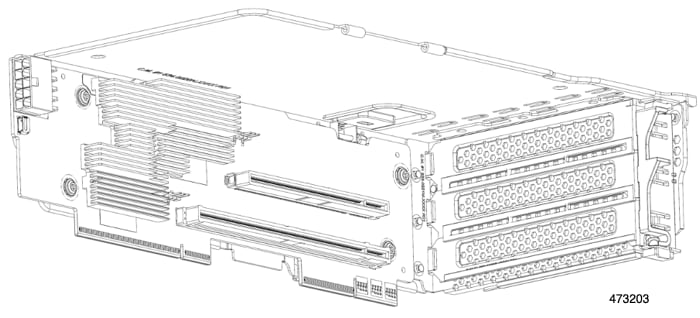

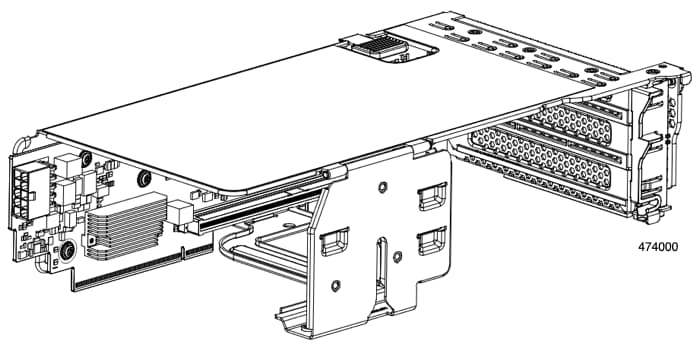

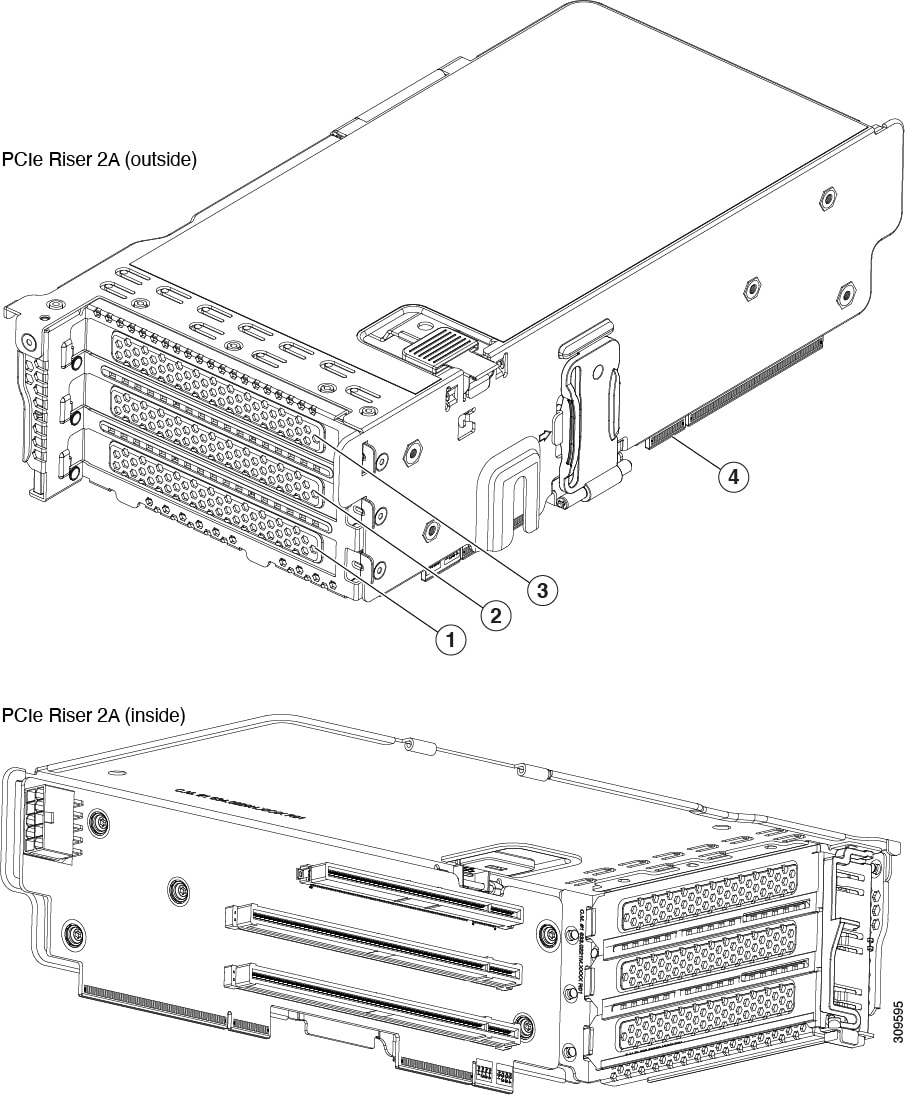

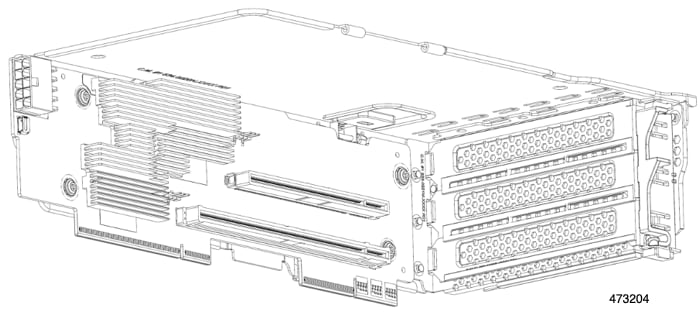

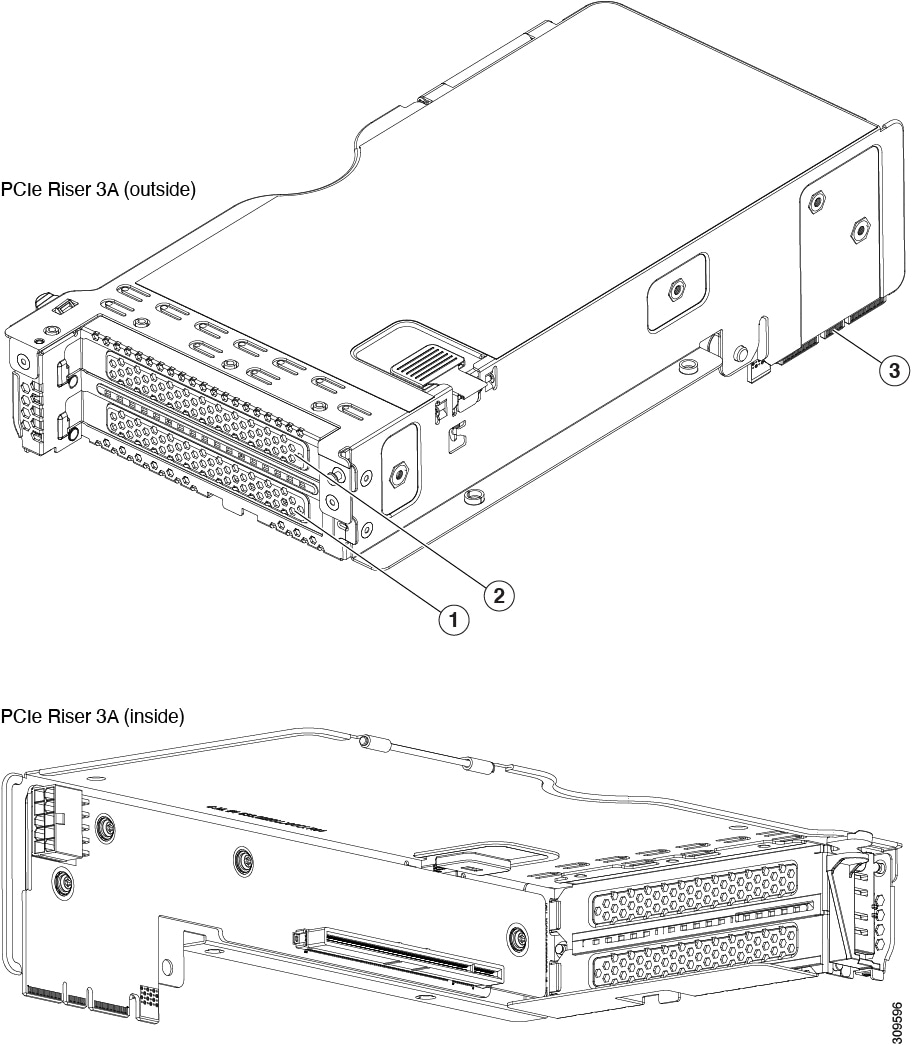

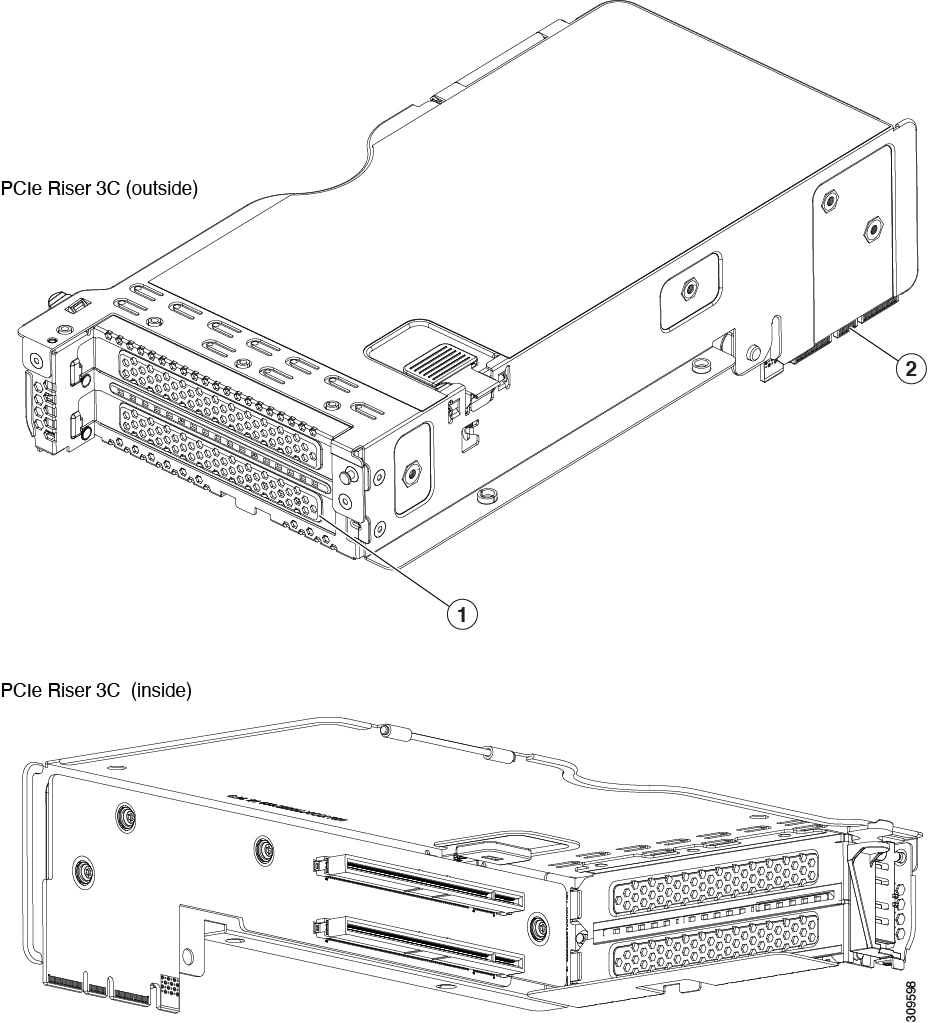

Rear PCIe risers support the following PCIe options:

- Up to 7 PCIe Gen 5 slots (3 x16 slots plus 4 x8 slots)
- Up to 5 PCIe Gen 5 x16 slots
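To compare the two rear-riser options, the sketch below counts slots and total lanes for each. It assumes, which the text does not state, that every slot is electrically wired at its listed width (x16 or x8). Under that assumption both options expose 80 PCIe Gen 5 lanes and differ only in how those lanes are divided among slots. The dictionary layout and function names are illustrative.

```python
# Sketch: slot and lane totals for the two rear-riser options listed above.
# Assumption: each slot is electrically wired at its listed width.

RISER_OPTIONS = {
    "7 slots (3 x16 + 4 x8)": {16: 3, 8: 4},  # width -> number of slots
    "5 slots (5 x16)": {16: 5},
}


def slot_count(option: dict[int, int]) -> int:
    """Number of slots in a riser option."""
    return sum(option.values())


def total_lanes(option: dict[int, int]) -> int:
    """Sum of lane widths across all slots in a riser option."""
    return sum(width * count for width, count in option.items())


for name, option in RISER_OPTIONS.items():
    print(f"{name}: {slot_count(option)} slots, {total_lanes(option)} lanes")
```

With the listed widths, 3 × 16 + 4 × 8 and 5 × 16 both come to 80, so the choice trades slot count for per-slot bandwidth.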

- Optionally, GPUs can be installed in the rear PCIe risers:
  - Up to 3 double-wide GPUs
  - Up to 8 single-wide GPUs
- Internal slot for a 24G Tri-Mode RAID controller with SuperCap for write-cache backup, or for up to two 24G Tri-Mode HBA controllers.
- One mLOM/VIC card providing 10/25/40/50/100/200 Gbps connectivity.
- Two power supplies (PSUs) that support N+1 power configuration and cold redundancy.
- Six modular, hot-swappable fans.

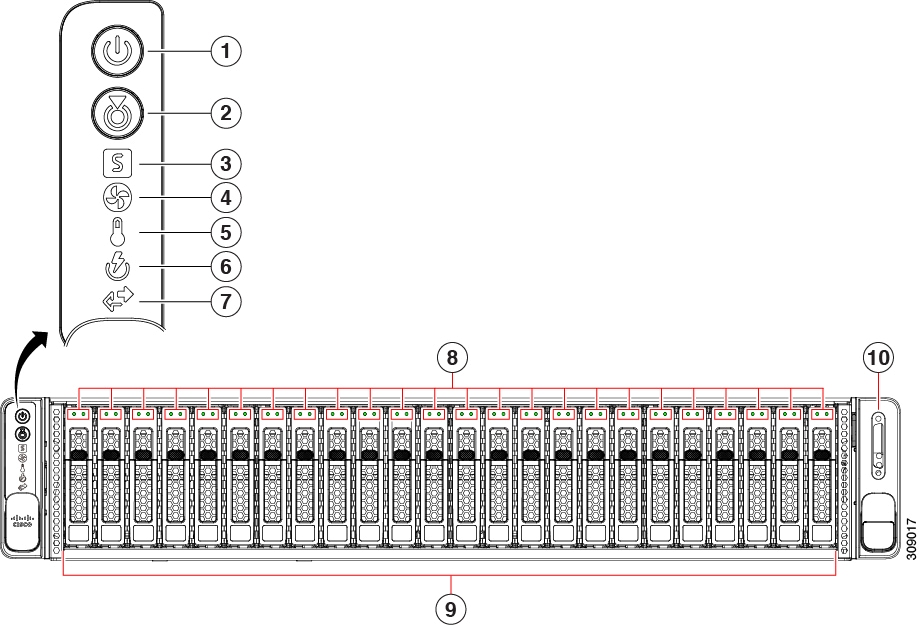

Server Configurations, UCSC-C240-M8SX

The Cisco UCS C240 M8SX server offers a hybrid backplane that supports the following:

- Front-loading drive bays 1 through 24 support 2.5-inch SAS/SATA/U.3 NVMe drives.
  - U.3 drives are supported in all 24 slots when used with the Tri-Mode RAID controller.
  - Slots 1 through 4 and 21 through 24 can support direct-attach NVMe SSDs (either U.2 or U.3).
- Optionally, the rear-loading drive bays support 2.5-inch SAS/SATA or NVMe drives.
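The M8SX front-bay rules can be expressed as a small lookup, which may help when planning which bays receive direct-attach NVMe drives. The helper below is hypothetical and encodes only what the list above states: all 24 front bays accept SAS/SATA drives and, with the Tri-Mode RAID controller, U.3 NVMe drives, while bays 1 through 4 and 21 through 24 additionally accept direct-attach U.2 or U.3 NVMe SSDs.

```python
# Hypothetical planning helper for UCSC-C240-M8SX front bays.
# Rules are taken from the text; this is not a Cisco tool or API.

FRONT_BAYS = range(1, 25)  # bays 1 through 24
DIRECT_ATTACH_NVME_BAYS = set(range(1, 5)) | set(range(21, 25))  # 1-4, 21-24


def front_bay_options(bay: int) -> list[str]:
    """Return the drive attachment options the text lists for a front bay."""
    if bay not in FRONT_BAYS:
        raise ValueError(f"bay {bay} is not a front bay (1-24)")
    options = ["SAS/SATA", "U.3 NVMe (with Tri-Mode RAID controller)"]
    if bay in DIRECT_ATTACH_NVME_BAYS:
        options.append("direct-attach NVMe (U.2 or U.3)")
    return options
```

For example, `front_bay_options(3)` returns all three options, while `front_bay_options(12)` returns only the first two.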

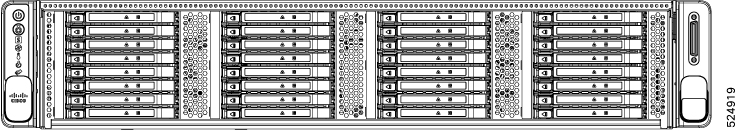

Server Configurations, UCSC-C240-M8E3S

The 32-drive NVMe configuration (UCSC-C240-M8E3S) can be ordered as an E3.S NVMe-only server. This server has an NVMe backplane that supports the following:

- Front-loading drive bays 1 through 32 support EDSFF E3.S NVMe drives.
- The front-loading bays support a flexible EDSFF drive configuration of either 32 E3.S 1T drives or 16 E3.S 2T drives.
- Optionally, the rear-loading drive bays support up to four E3.S 1T or 2T drives.

Note: NVMe drives are supported only on a dual-CPU server and are not RAID-controlled.

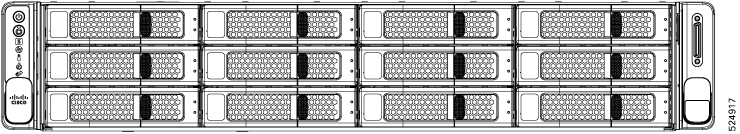

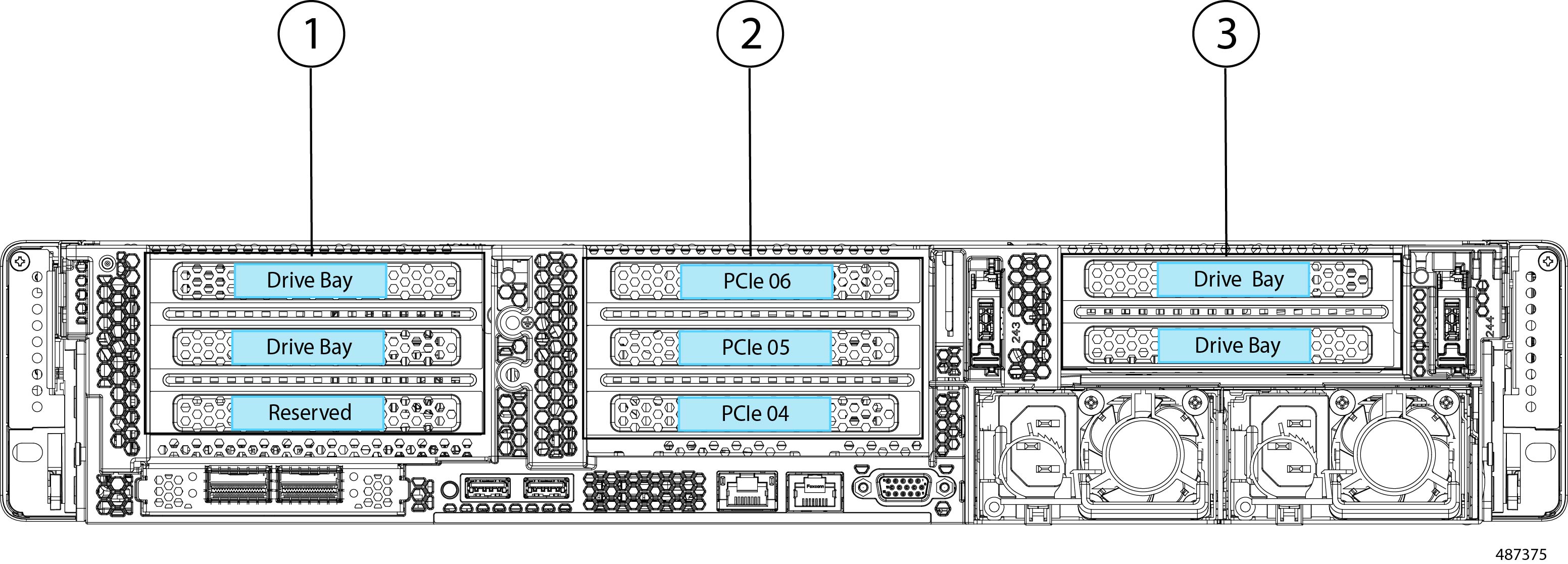

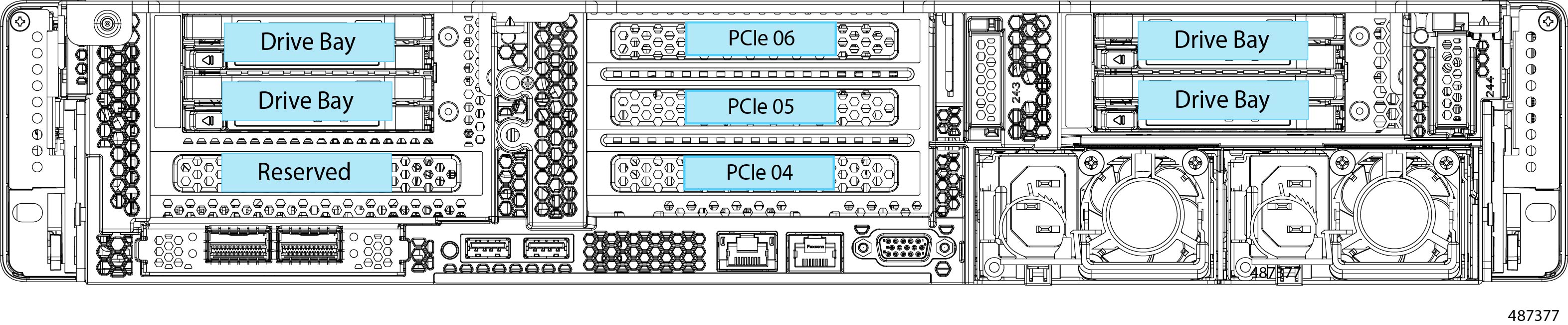

Server Configurations, UCSC-C240-M8L

The server is orderable with the following configuration for large form factor (LFF) drives.

- Cisco UCS C240 M8 LFF 16 (UCSC-C240-M8L): large form-factor (LFF) drives, with a 24-drive backplane.
  - Front-loading drive bays 1 through 12 support 3.5-inch SAS3/SAS4 drives (HDD).
  - The midplane drive cage supports up to four 3.5-inch SAS-only drives (HDD).
  - Optionally, rear-loading drive bays support either two or four SFF SAS3/SAS4 or E3.S NVMe drives. With the rear drives installed, this is the storage-centric server configuration.
