Cisco and Hitachi Adaptive Solutions for Converged Infrastructure as a Stretched Data Center

Available Languages

Cisco and Hitachi Adaptive Solutions for Converged Infrastructure as a Stretched Data Center

Design and Implementation Guide for Cisco and Hitachi Adaptive Solutions with Cisco ACI Multi-Pod and Hitachi Global-Active Device

Last Updated: December 20, 2019

About the Cisco Validated Design Program

The Cisco Validated Design (CVD) program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, go to:

http://www.cisco.com/go/designzone.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

CCDE, CCENT, Cisco Eos, Cisco Lumin, Cisco Nexus, Cisco StadiumVision, Cisco TelePresence, Cisco WebEx, the Cisco logo, DCE, and Welcome to the Human Network are trademarks; Changing the Way We Work, Live, Play, and Learn and Cisco Store are service marks; and Access Registrar, Aironet, AsyncOS, Bringing the Meeting To You, Catalyst, CCDA, CCDP, CCIE, CCIP, CCNA, CCNP, CCSP, CCVP, Cisco, the Cisco Certified Internetwork Expert logo, Cisco IOS, Cisco Press, Cisco Systems, Cisco Systems Capital, the Cisco Systems logo, Cisco Unified Computing System (Cisco UCS), Cisco UCS B-Series Blade Servers, Cisco UCS C-Series Rack Servers, Cisco UCS S-Series Storage Servers, Cisco UCS Manager, Cisco UCS Management Software, Cisco Unified Fabric, Cisco Application Centric Infrastructure, Cisco Nexus 9000 Series, Cisco Nexus 7000 Series. Cisco Prime Data Center Network Manager, Cisco NX-OS Software, Cisco MDS Series, Cisco Unity, Collaboration Without Limitation, EtherFast, EtherSwitch, Event Center, Fast Step, Follow Me Browsing, FormShare, GigaDrive, HomeLink, Internet Quotient, IOS, iPhone, iQuick Study, LightStream, Linksys, MediaTone, MeetingPlace, MeetingPlace Chime Sound, MGX, Networkers, Networking Academy, Network Registrar, PCNow, PIX, PowerPanels, ProConnect, ScriptShare, SenderBase, SMARTnet, Spectrum Expert, StackWise, The Fastest Way to Increase Your Internet Quotient, TransPath, WebEx, and the WebEx logo are registered trademarks of Cisco Systems, Inc. and/or its affiliates in the United States and certain other countries.

All other trademarks mentioned in this document or website are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (0809R)

© 2019 Cisco Systems, Inc. All rights reserved.

Table of Contents

Cisco and Hitachi Adaptive Solutions for CI Overview

Cisco ACI Multi-Pod Design with ACI 4.2

Cisco Workload Optimization Manager

APIC Controller Considerations

Hitachi Global-Active Device (GAD)

Cisco UCS Domain Configuration

Deployment Hardware and Software

DC1 Physical Cabling for the Cisco UCS 6454 with the VSP 5100

DC2 Physical Cabling for the Cisco UCS 6454 with the VSP G370

Cisco ACI Multi-Pod Deployment

Deploy Inter-Pod Network (IPN)

Setup ACI Fabric for Multi-Pod

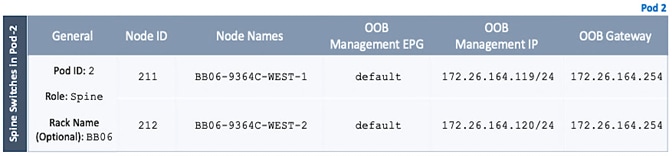

Setup Pod-2 Spine Switches, Leaf Switches, and APIC(s)

Physical Connectivity - Inter-Pod Network

Setup ACI Fabric for Multi-Pod – Using Configuration Wizard

Configure Inter-Pod Connectivity

Add Physical Pod – Second Pod or Site (Pod-2)

Configure DHCP Relay on IPN Devices

Configure OSPF Interface Profile for Spines in Pod-2

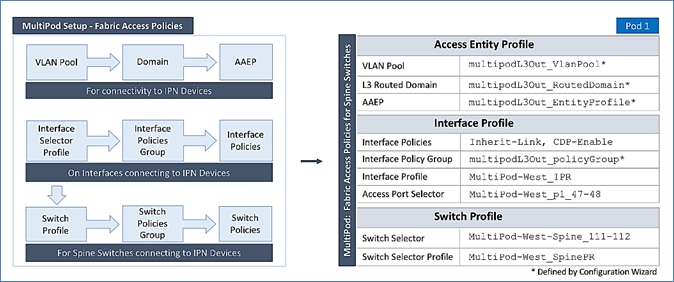

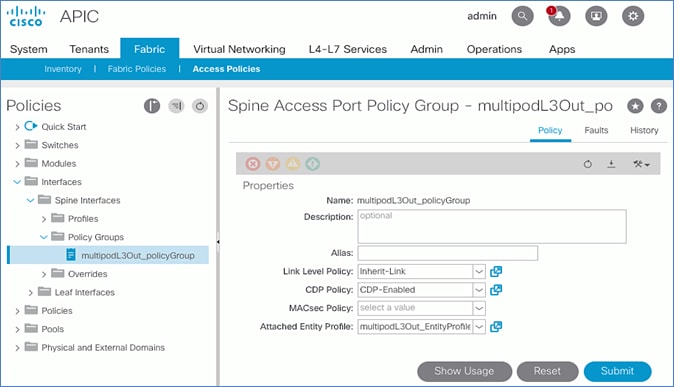

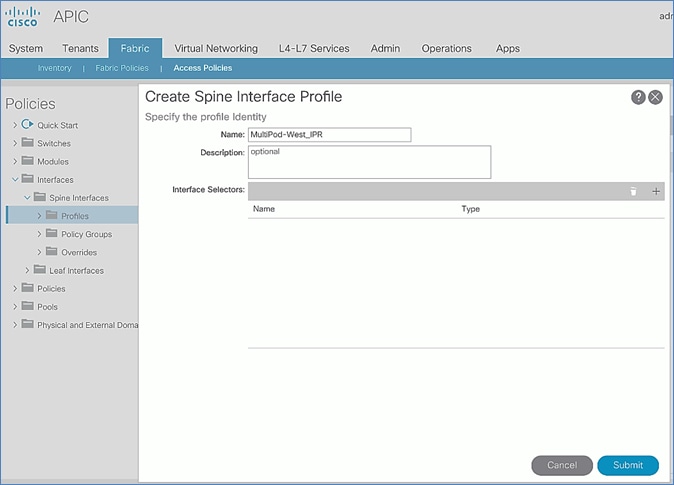

Setup Fabric Access Policies for Spine Switches in Pod-1

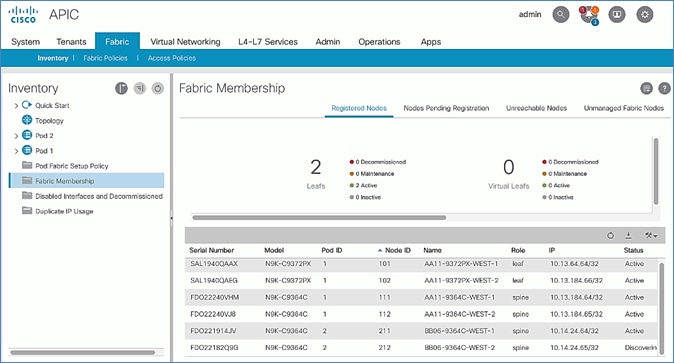

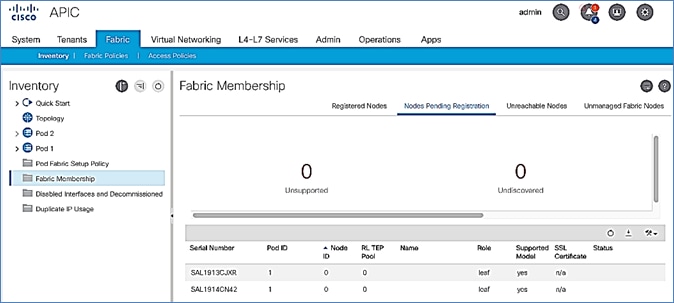

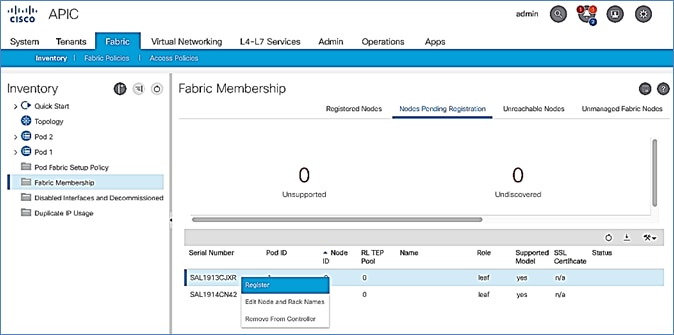

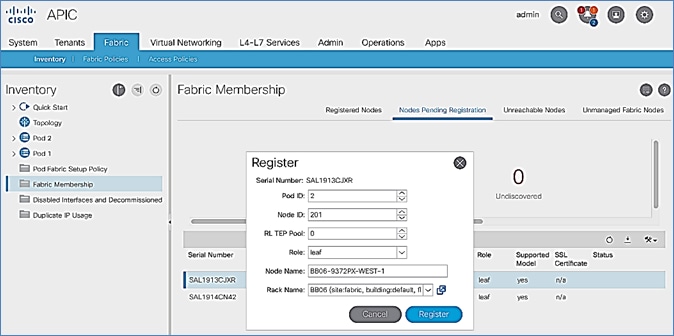

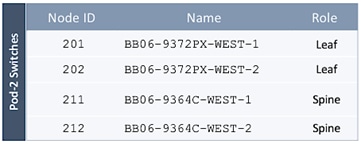

Deploy Spine and Leaf Switches in Pod-2

Configure NTP for Pod-2 using Out-of-Band Management

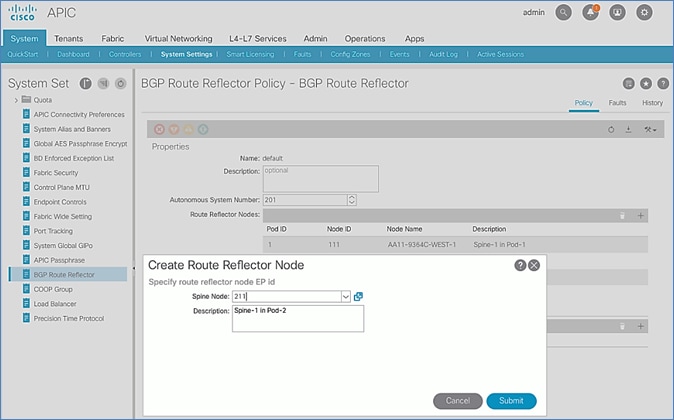

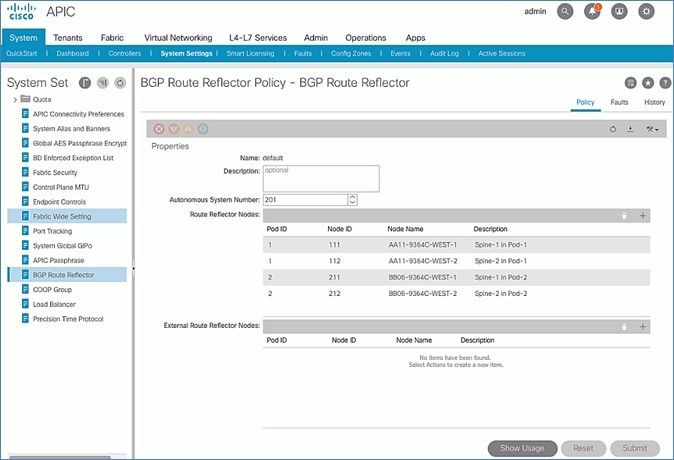

Update BGP Route Reflector Policy for Pod-2

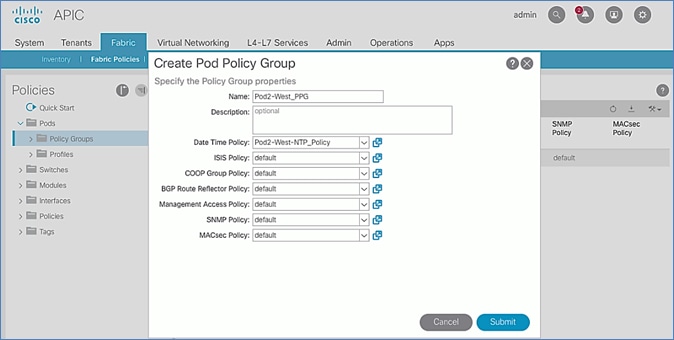

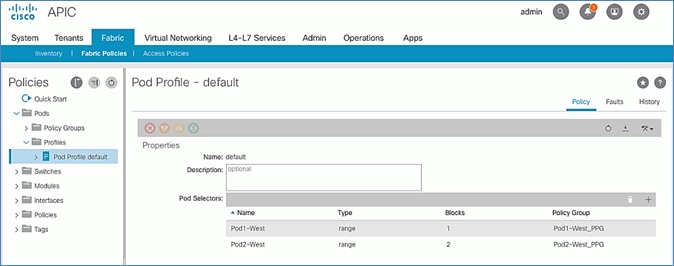

Update Pod Profile to Apply Pod Policies

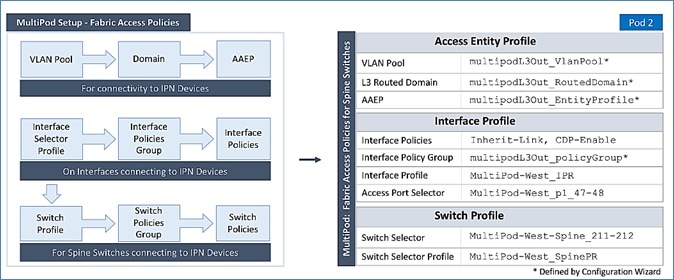

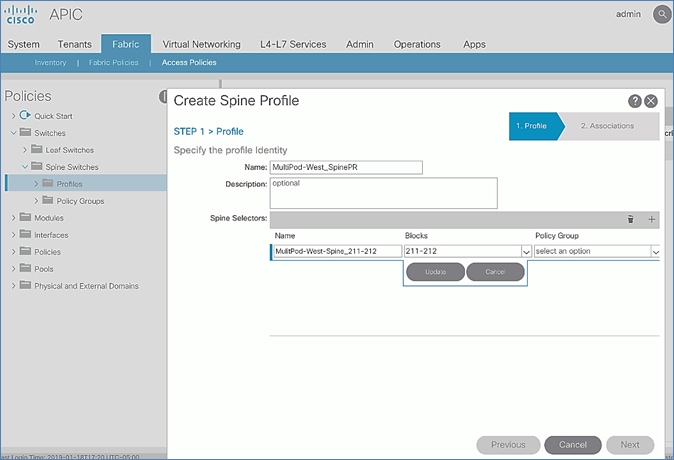

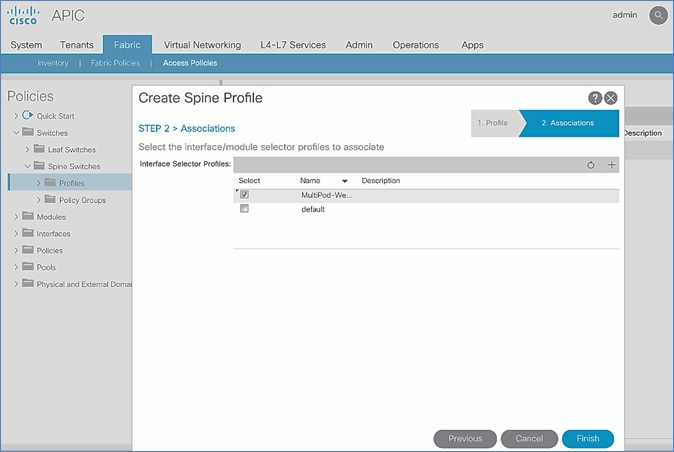

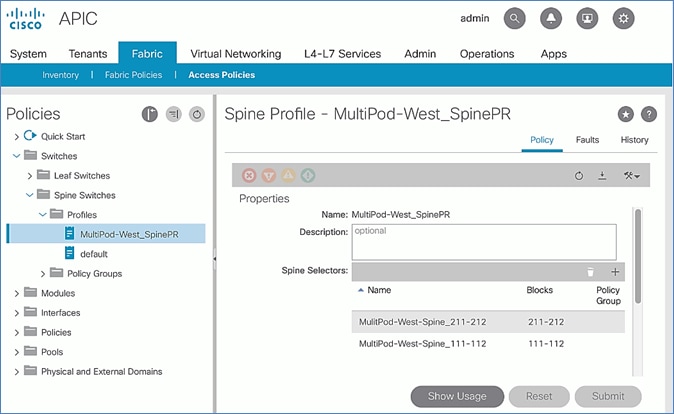

Setup Fabric Access Policies for Spine Switches in Pod-2

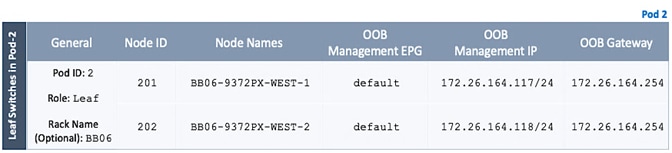

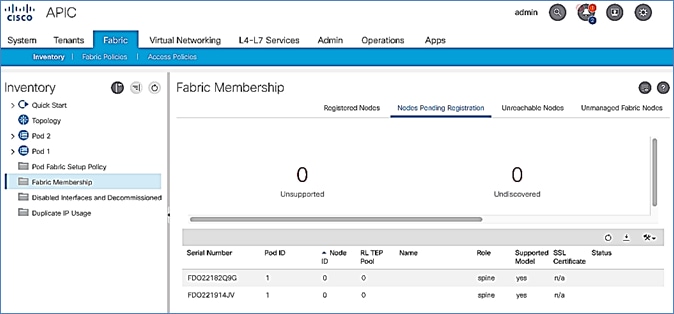

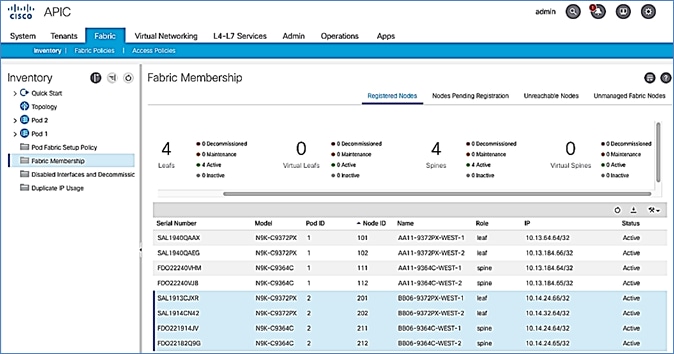

Verify Pod-2 Switches are Part of the ACI Fabric

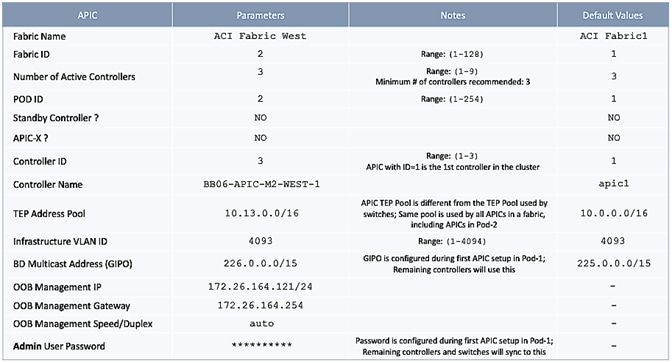

Verify Pod-2 APIC is Part of the APIC Cluster

Add Pod-2 APIC as DHCP Relay Destination

Verify ACI Multi-Pod Fabric Setup

Verify OSPF Status on Spine Switches

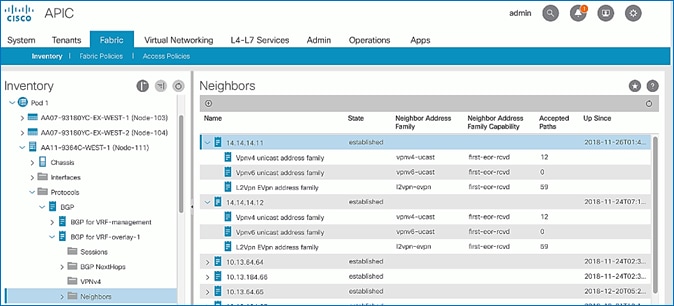

Verify MP-BGP EVPN Status on Spine Switches

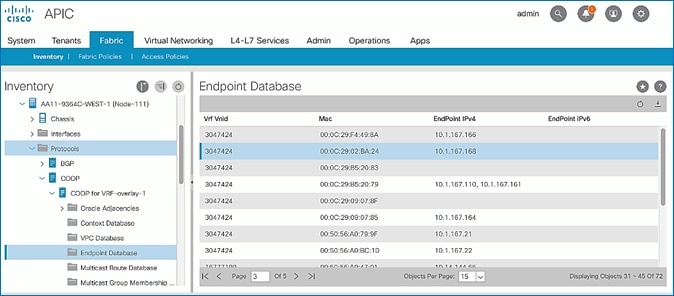

Verify COOP Status on Spine Switches

Deploy Shared Layer 3 Connectivity to Outside – Pod-2

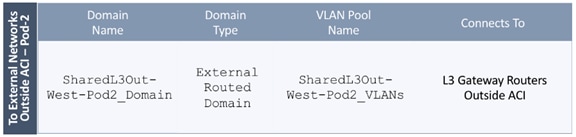

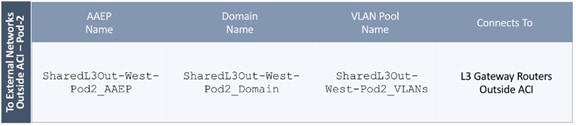

Create VLAN Pool for External Routed Domain

Configure Domain Type for External Routed Domain

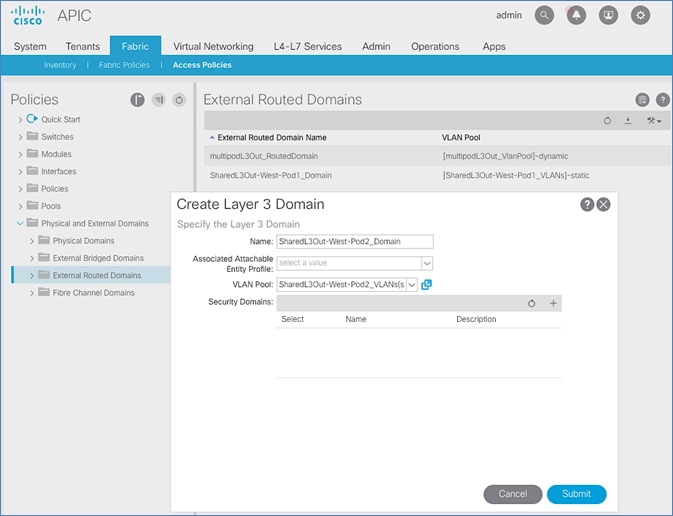

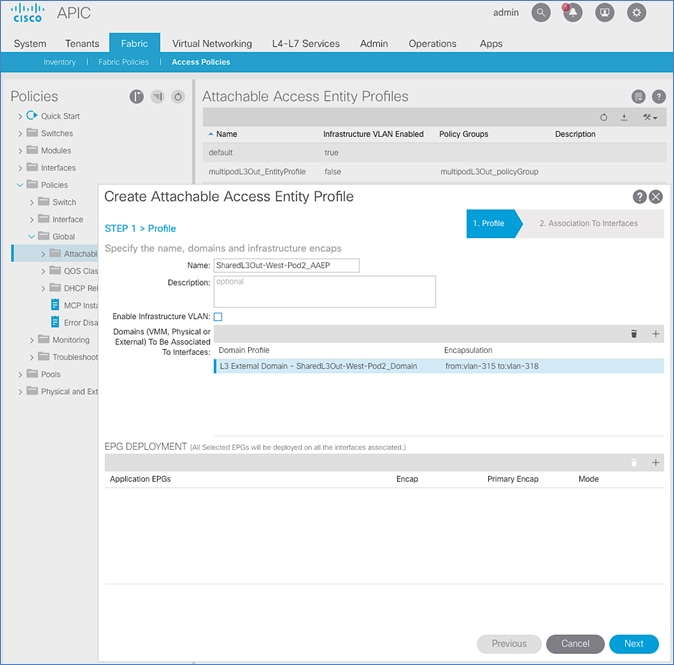

Create Attachable Access Entity Profile for External Routed Domain

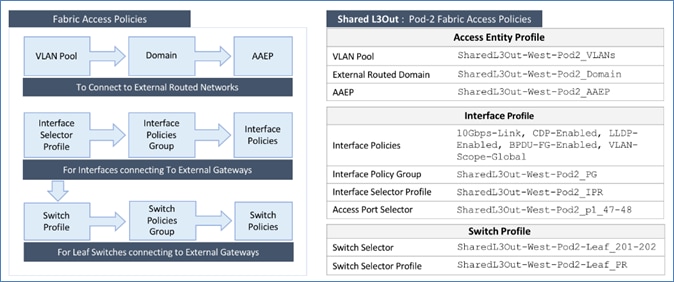

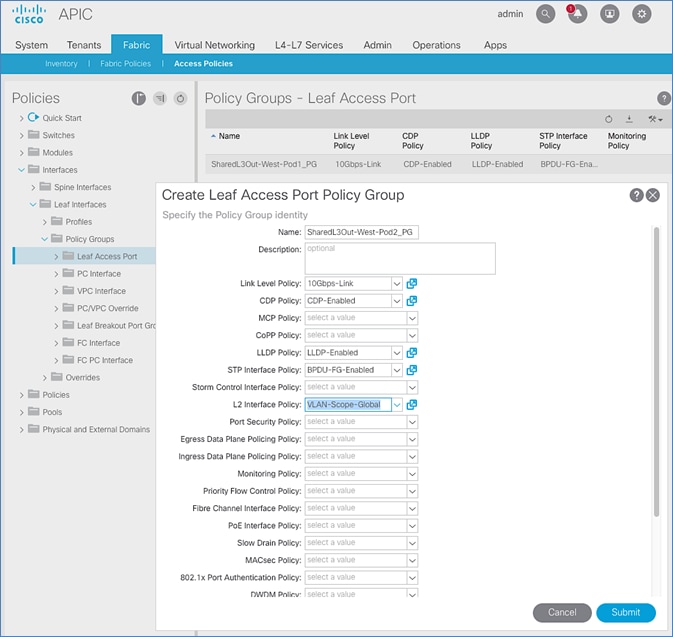

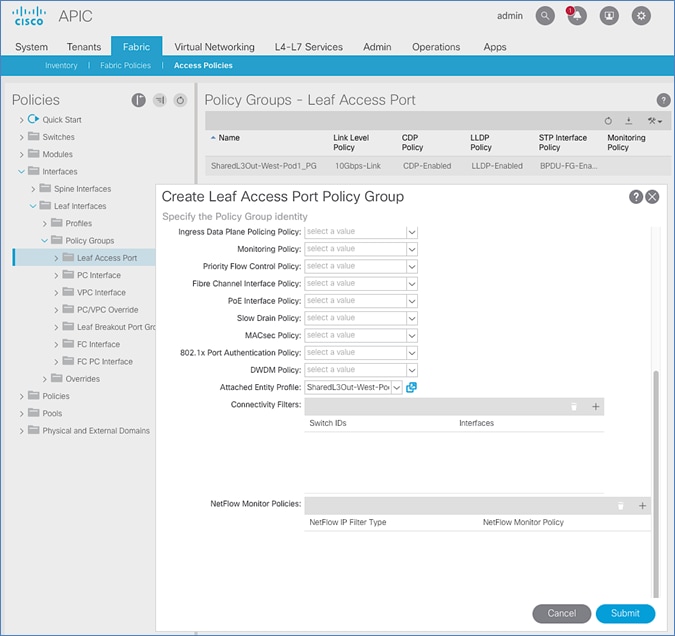

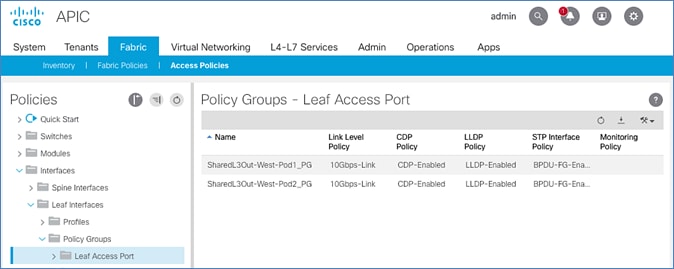

Configure Interfaces to External Routed Domain

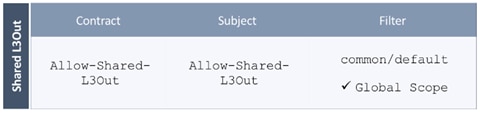

Configure Tenant Networking for Shared L3Out

Configure External Routed Networks under Tenant Common

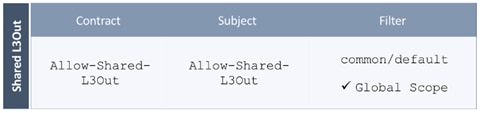

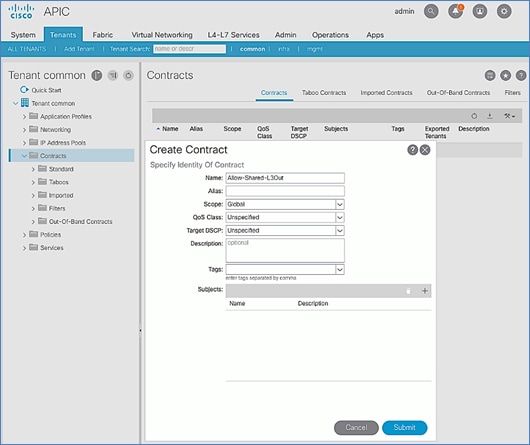

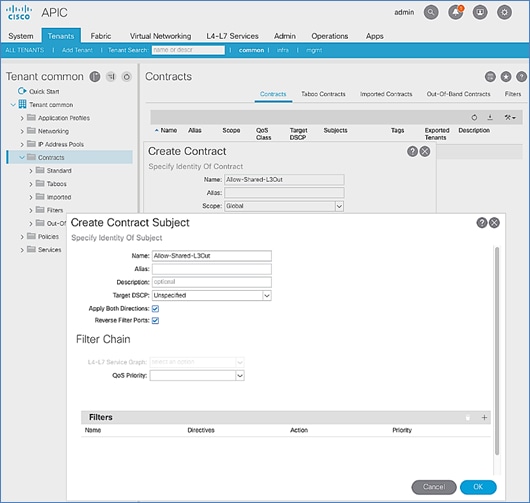

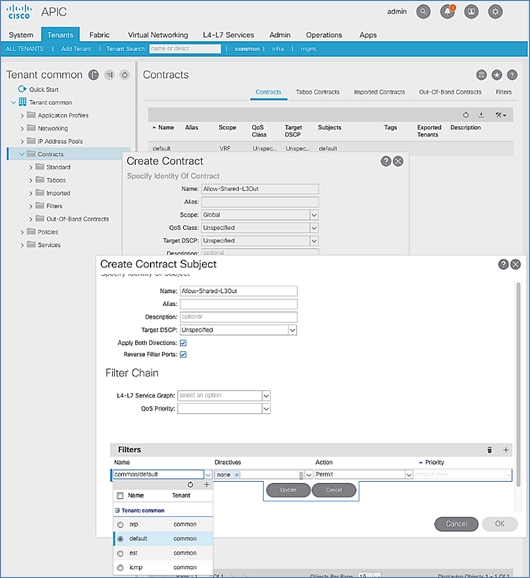

Create Contracts for External Routed Networks from Tenant (common)

Provide Contracts for External Routed Networks from Tenant (common)

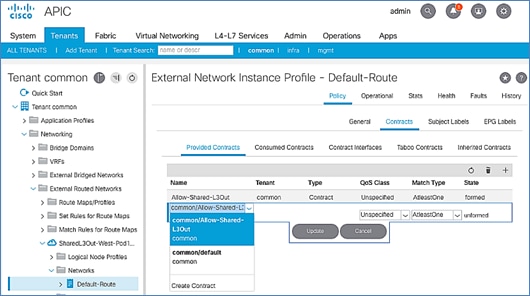

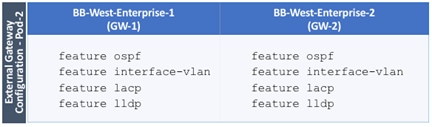

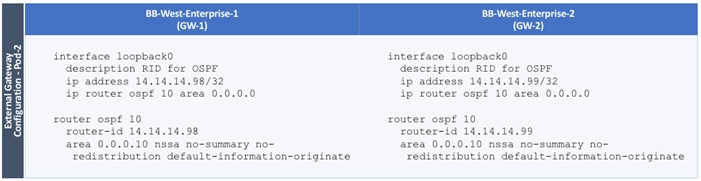

Configure External Gateways in the Outside Network

Physical VSP Connections at Data Centers 1, 2 and 3

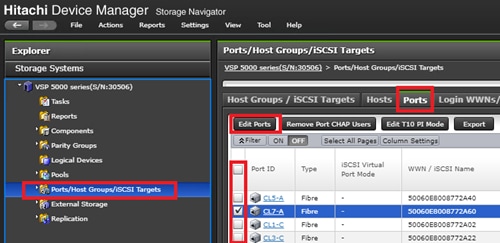

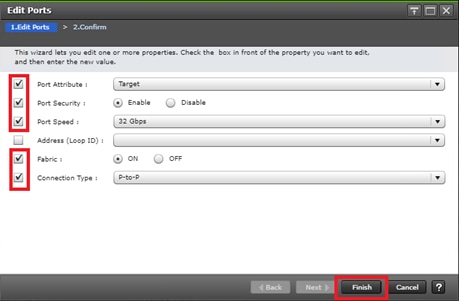

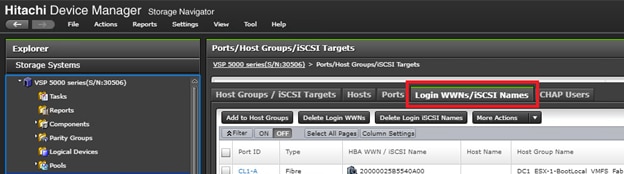

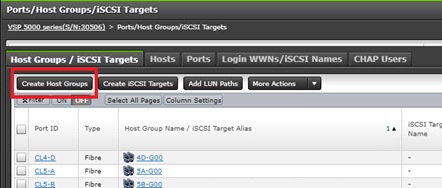

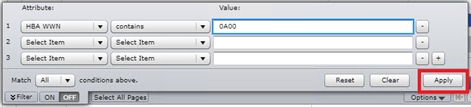

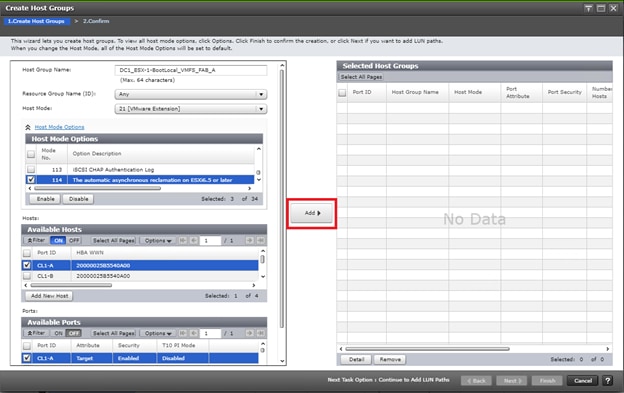

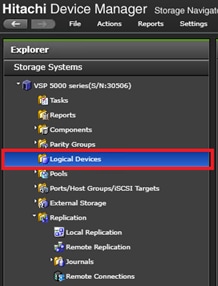

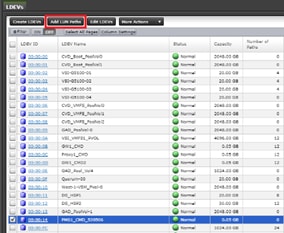

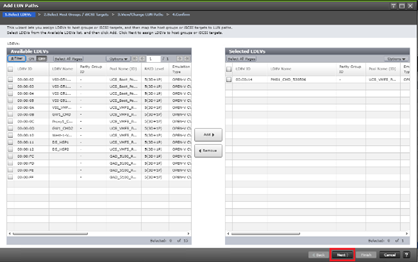

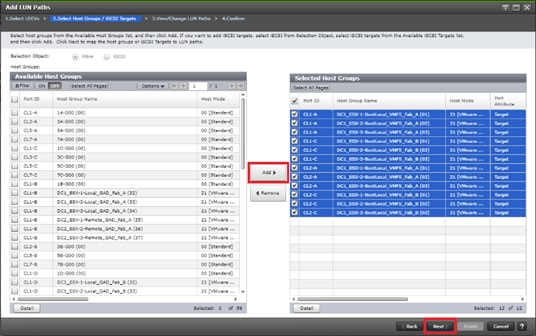

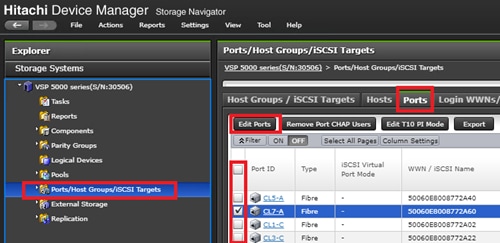

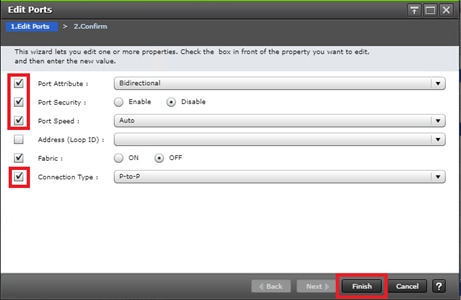

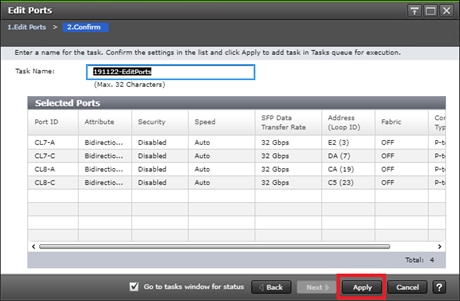

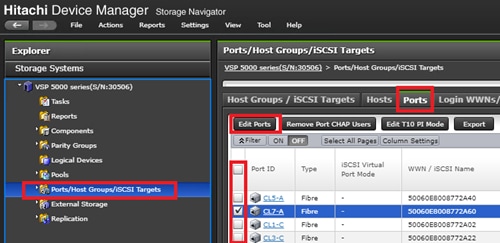

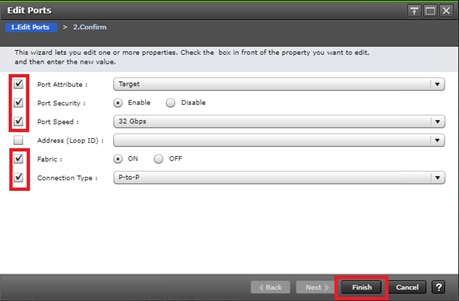

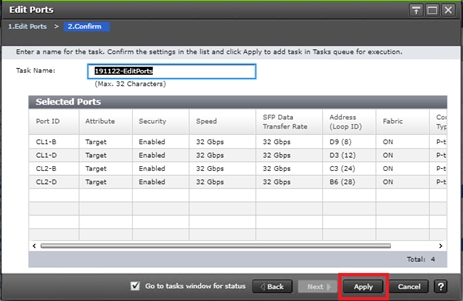

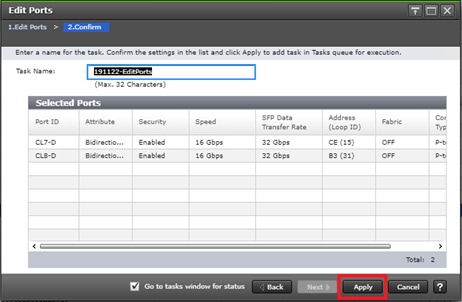

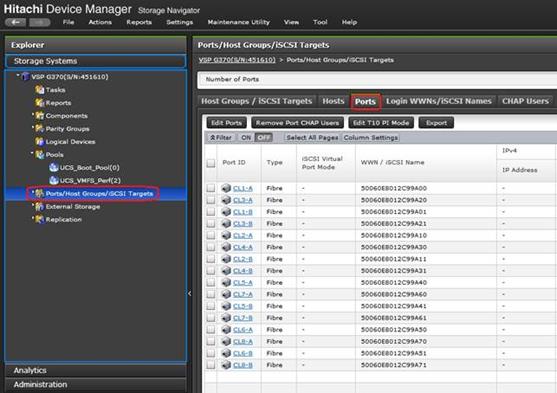

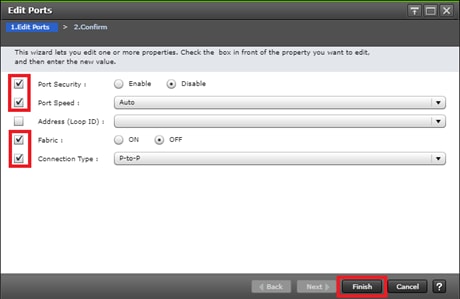

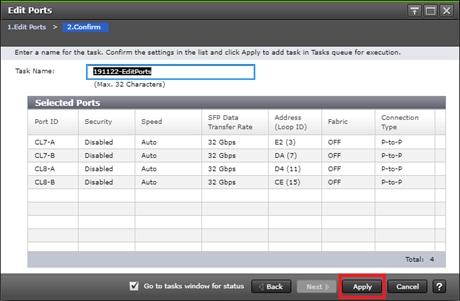

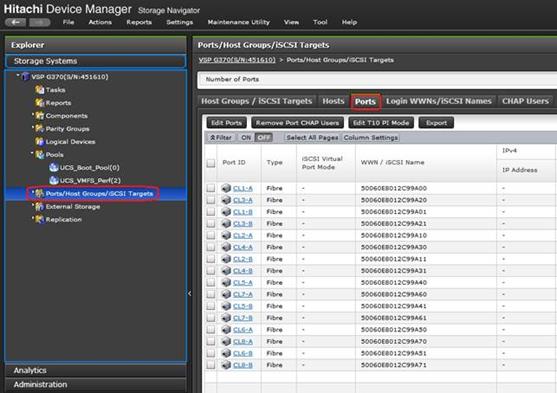

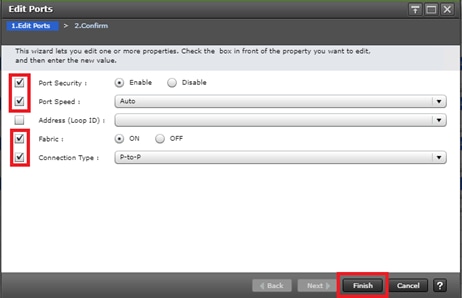

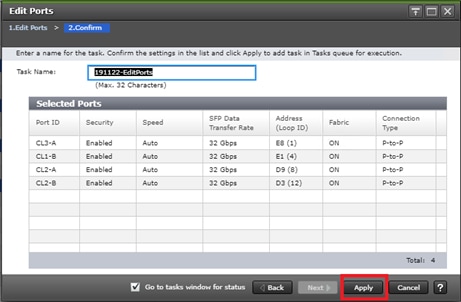

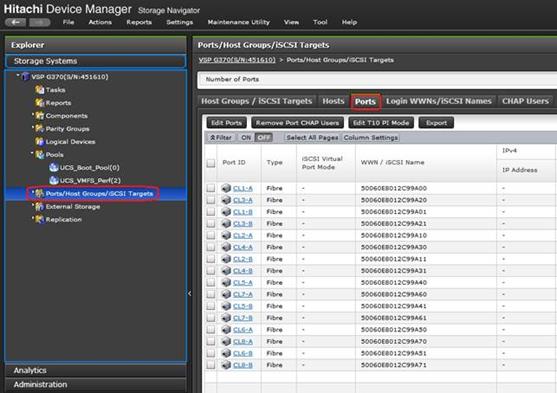

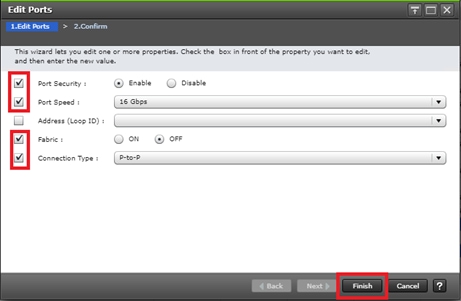

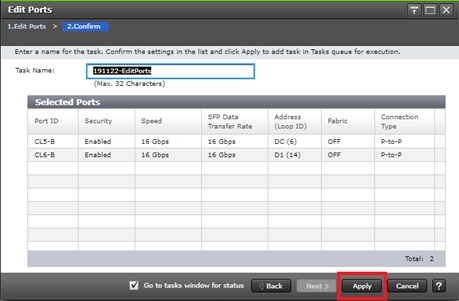

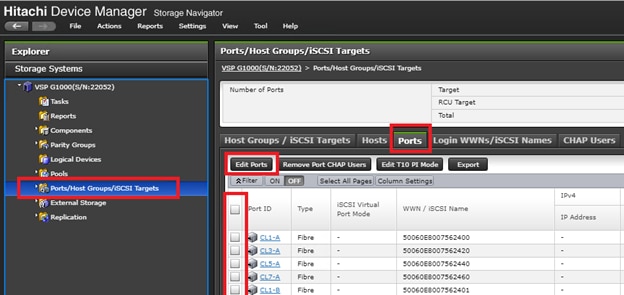

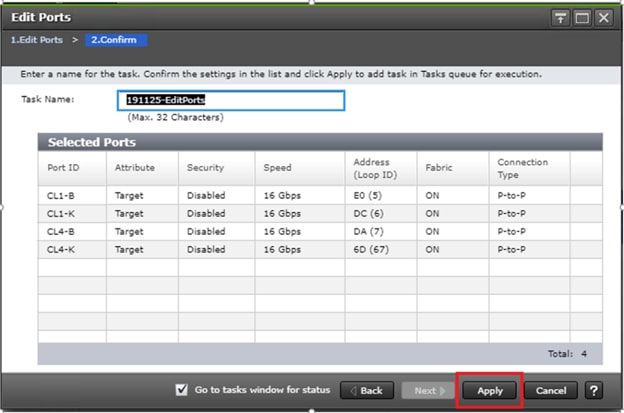

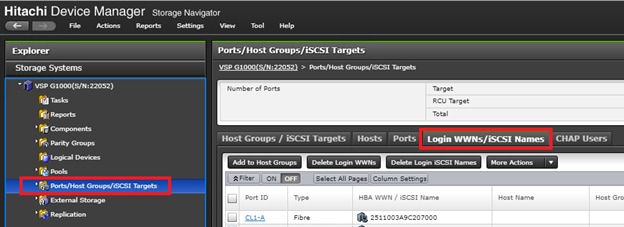

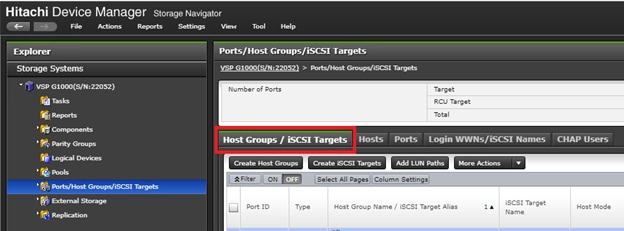

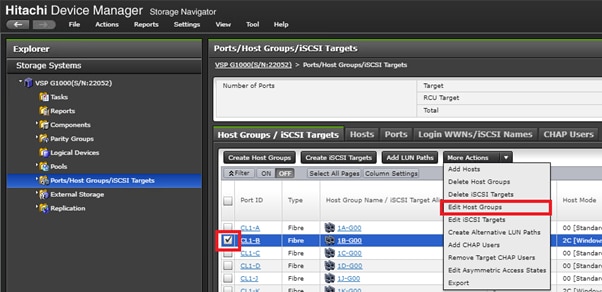

Configure Fibre Channel Ports at DC1 for Local Boot LUNs and VMFS Storage

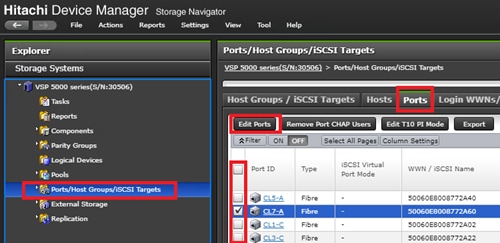

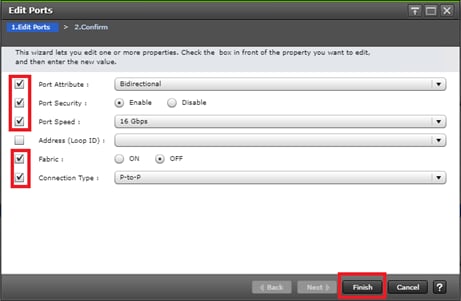

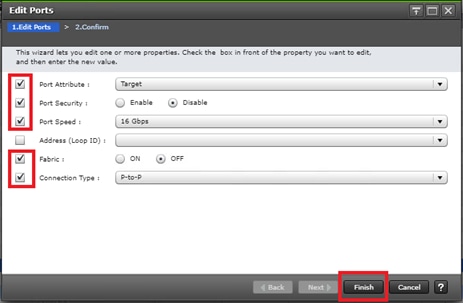

Configure Fibre Channel Ports at DC2 for Local Boot LUNs and VMFS Storage

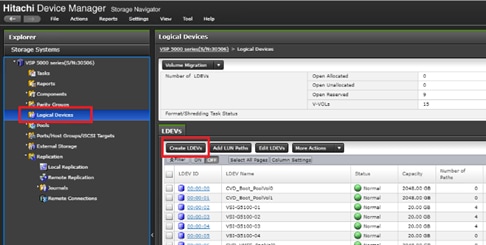

Deploy Hitachi GAD with RAIDCOM

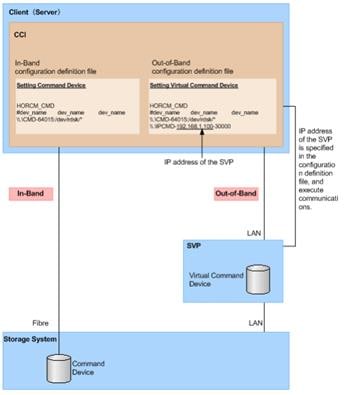

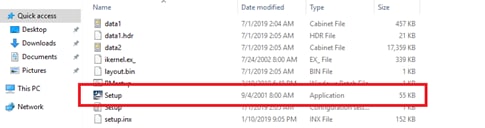

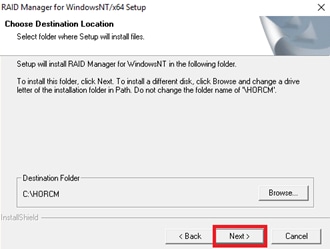

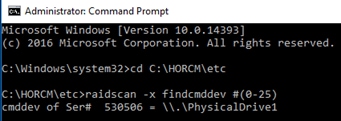

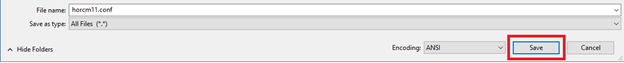

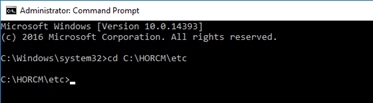

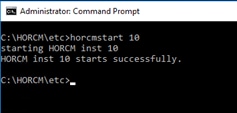

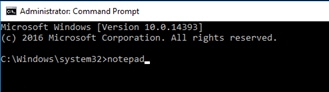

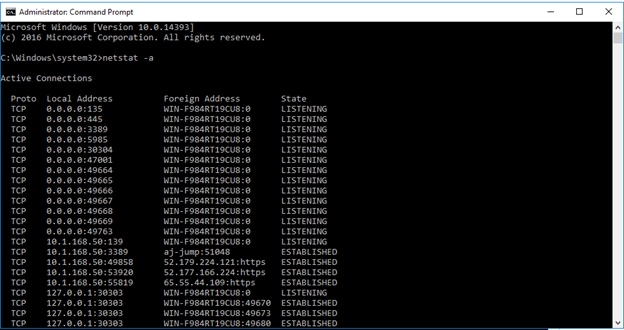

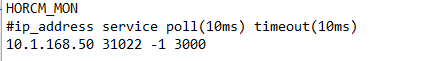

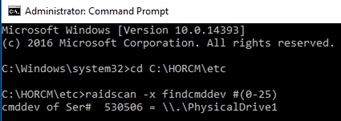

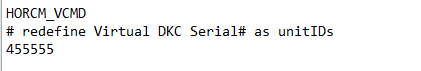

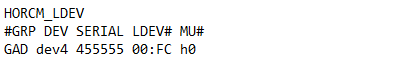

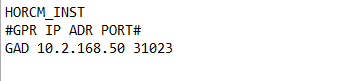

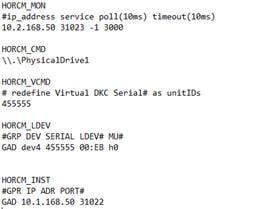

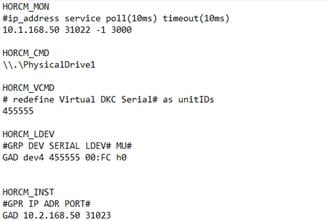

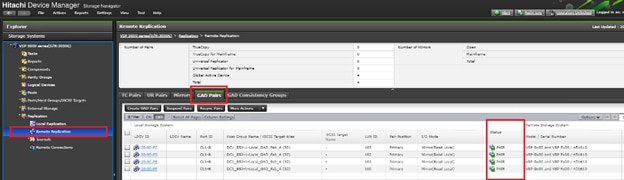

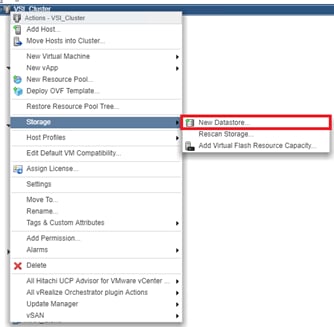

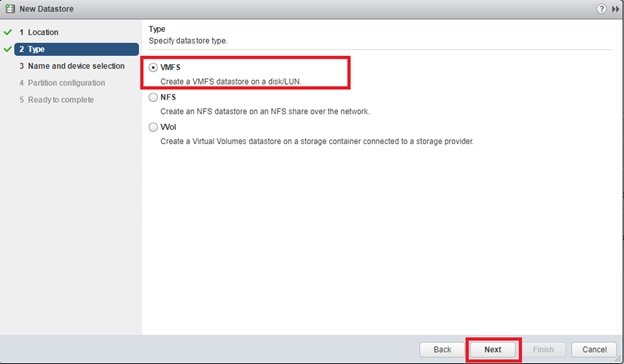

Create a GAD Pair with HORCM Files

Cisco Validated Designs (CVDs) are systems and solutions that are designed, tested, and documented to facilitate and accelerate customer deployments. CVDs incorporate a wide range of technologies, products and best-practices into a portfolio of solutions that address the business needs of our customers.

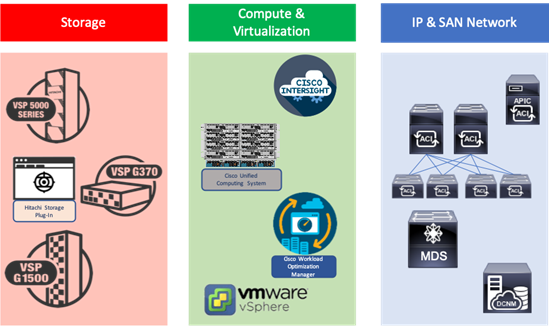

Cisco and Hitachi are working together to deliver a converged infrastructure solution that helps enterprise businesses meet the challenges of today and position themselves for the future. This CVD expands the previously released Cisco and Hitachi Adaptive Solutions with Cisco ACI CVD which is a Virtual Server Infrastructure (VSI) incorporating the Cisco Application Centric Infrastructure (ACI). This reimplementation creates a stretched data center utilizing the Cisco ACI Multi-Pod design for a seamless network between locations, Hitachi Global-Active Device (GAD) for active/active storage across these same locations and features the new Hitachi VSP 5100.

This document explains the design and implementation of the Cisco and Hitachi Adaptive Solutions for Converged Infrastructure as a Virtual Server Infrastructure (VSI) using Cisco ACI and Hitachi GAD to create a private cloud extended across multiple locations. The recommended solution architecture is built on the Cisco Unified Computing System (Cisco UCS) using the unified software release to support the Cisco UCS hardware platforms for the Cisco UCS B-Series Blade Server, Cisco UCS 6400 or 6300 Fabric Interconnects, Cisco Nexus 9000 Series switches, Cisco MDS 9000 Multilayer switches, and Hitachi Virtual Storage Platform (VSP) 5000 system along with other enterprise VSP systems.

Introduction

Modernizing your data center can be overwhelming, and it’s vital to select a trusted technology partner with proven expertise. With Cisco and Hitachi as partners, companies can build for the future by enhancing systems of record, supporting systems of innovation, and growing their business. Organizations need an agile solution, free from operational inefficiencies, to deliver continuous data availability, meet SLAs, and prioritize innovation.

Cisco and Hitachi Adaptive Solutions for Converged Infrastructure as a Stretched Data Center is a best practice data center architecture extended between locations as a seamless environment for the underlying network, storage hypervisor-based compute infrastructure. This architecture expands the Cisco and Hitachi Adaptive Solutions with Cisco ACI utilizing the Cisco ACI Multi-Pod design for a uniform network and incorporates Hitachi GAD for storage resiliency between locations and complies with the VMware vSphere Metro Storage Cluster (vMSC) specifications.

This design also features the introduction of the Hitachi VSP 5100 for both NVMe and SAS flash storage. The Hitachi VSP connects through the Cisco MDS Multilayer Switch to Cisco UCS and is enabled within the ACI network using the Cisco Nexus family of switches.

The reference architecture covers specifics of products utilized within the Cisco validation lab, but the solution is considered relevant for equivalent supported components listed within Cisco and Hitachi Vantara’s published compatibility matrixes. Supported adjustments from the example validated build must be evaluated with care as their implementation instructions may differ.

Audience

The audience for this document includes, but is not limited to; sales engineers, field consultants, professional services, IT managers, partner engineers, and customers who want to modernize their infrastructure to meet SLAs and their business needs at any scale.

Purpose of this Document

This document discusses the design and implementation of the Cisco and Hitachi Adaptive Solutions for Converged Infrastructure as a Stretched Data Center. This solution features a validated reference architecture composed of:

· Cisco UCS Compute

· Cisco Nexus Switches with ACI implementing the Cisco ACI Multi-Pod design

· Cisco MDS Multilayer Fabric Switches

· Hitachi Virtual Storage Platform

· Hitachi GAD

The design and technology decisions that went into this solution directly extend the previously published Cisco and Hitachi Adaptive Solutions for Converged Infrastructure with Cisco ACI Design Guide and the Cisco and Hitachi Adaptive Solutions for Converged Infrastructure with Cisco ACI Deployment Guide.

Solution Summary

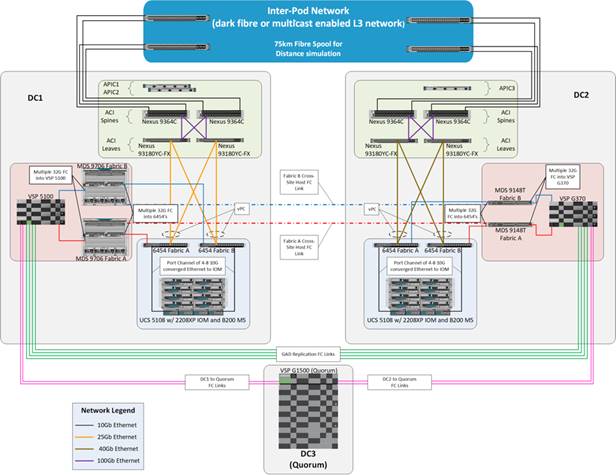

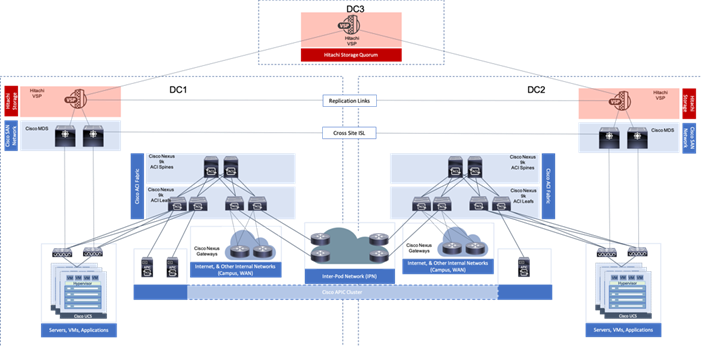

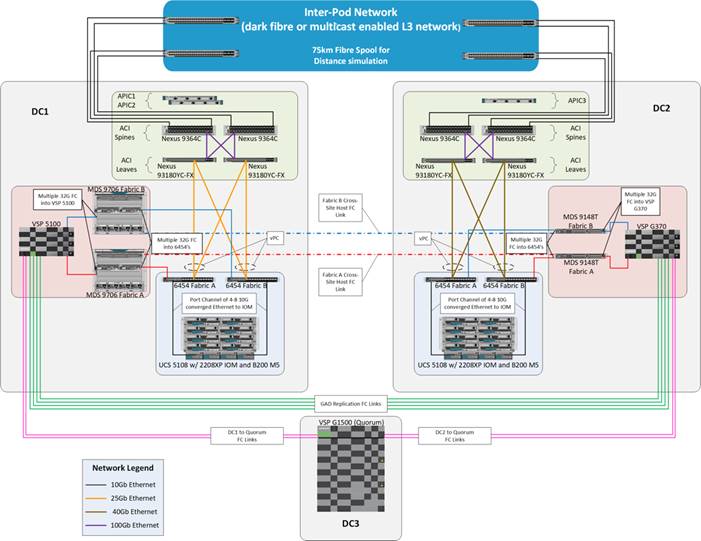

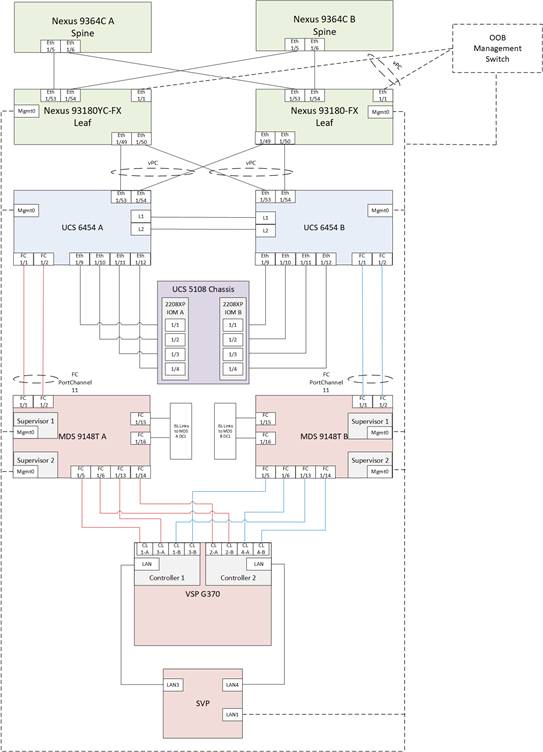

The Cisco and Hitachi Adaptive Solutions for CI as a Stretched Data Center is a validated reference architecture incorporating Cisco and Hitachi products to produce a resilient uniform data center infrastructure spanning multiple locations, keeping with the VMware restraints of the vMSC specification. In this validation, the IP network was displaced with a series of 75KM fibre spools and the SAN network was connected with shorter multimode fibre, relying on the independent validation of long-distance FC connections supported by both Cisco and Hitachi. The topology of this lab environment is shown in Figure 1.

Table 1 lists the hardware deployed in this reference architecture.

Table 1 Reference Architecture Hardware

| DC1 |

DC2 |

| Hitachi VSP 5100 |

Hitachi VSP G370 |

| Cisco MDS 9706 Multilayer Director Switches |

Cisco MDS 9148T Multilayer Fabric Switches |

| Cisco UCS B200 M5 Blade Servers |

Cisco UCS B200 M5 Blade Servers |

| Cisco UCS 6454 Fabric Interconnects |

Cisco UCS 6454 Fabric Interconnects |

| Cisco ACI Application Policy Infrastructure Controllers |

Cisco ACI Application Policy Infrastructure Controller |

| Cisco Nexus 93180YC-FX Switches (Leaf) |

Cisco Nexus 93180YC-FX Switches (Leaf) |

| Cisco Nexus 9364C Switches (Spine) |

Cisco Nexus 9364C Switches (Spine) |

| Cisco Nexus 93180YC-EX Switches (NX-OS Standalone Mode Data Center Interconnect/ Existing Network connectivity as implemented for lab simulation) |

Cisco Nexus 93180YC-EX Switches (NX-OS Standalone Mode Data Center Interconnect/ Existing Network connectivity as implemented for lab simulation) |

Cisco and Hitachi Adaptive Solutions for CI Overview

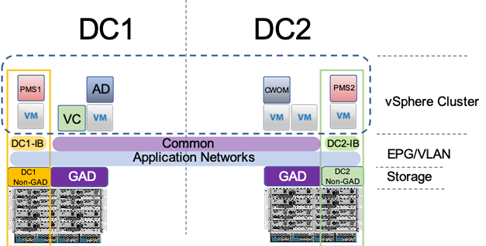

This Adaptive Solutions for CI release extends the previous Adaptive Solutions for CI with Cisco ACI CVD, adding new products from both Cisco and Hitachi to create the uniform stretched data center solution. Figure 2 illustrate the summary of the technologies used in this validated design. A full list of products and product references can be found in the Appendix: Solution References section.

Figure 2 Components in Adaptive Solutions for CI as a Stretched Data Center

What’s New in this Release?

Cisco ACI Multi-Pod Design with ACI 4.2

Cisco ACI is a holistic architecture that introduces hardware and software innovations built upon the Cisco Nexus 9000® Series product line. Cisco ACI provides a centralized policy-driven application deployment architecture that is managed through the Cisco Application Policy Infrastructure Controller (APIC). The Cisco ACI Multi-Pod design allows this architecture to extend the ACI Fabric in a uniform manner across multiple locations, defined as Pods, without the spine-leaf mesh requirements across pods implemented with the Stretched design of the ACI Fabric.

A thorough explanation of the ACI Multi-Pod design can be found in the ACI Multi-Pod White Paper.

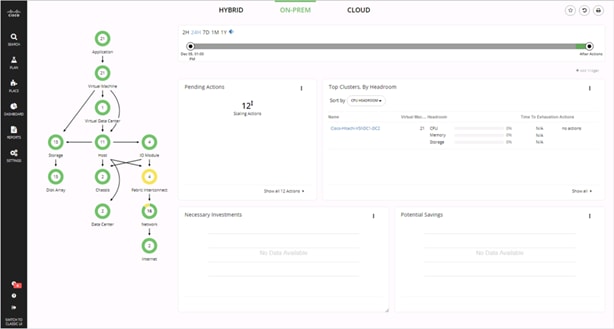

Cisco Workload Optimization Manager

The Cisco Workload Optimization Manager (CWOM) is software that continuously analyzes workload consumption, costs, and compliance constraints and automatically allocates resources in real-time, on-premises and in the cloud. As an optional component to the Adaptive Solutions infrastructure, it brings planning and efficiency to resource use and expansion needs. The CWOM can do the following:

· Deliver better application response time

· Increase utilization by 20% or more

· Reduce user generated tickets by 20% or more

· Show a ROI within 90 days or less

To learn more about CWOM, go to: https://www.cisco.com/c/en/us/products/servers-unified-computing/workload-optimization-manager/index.html.

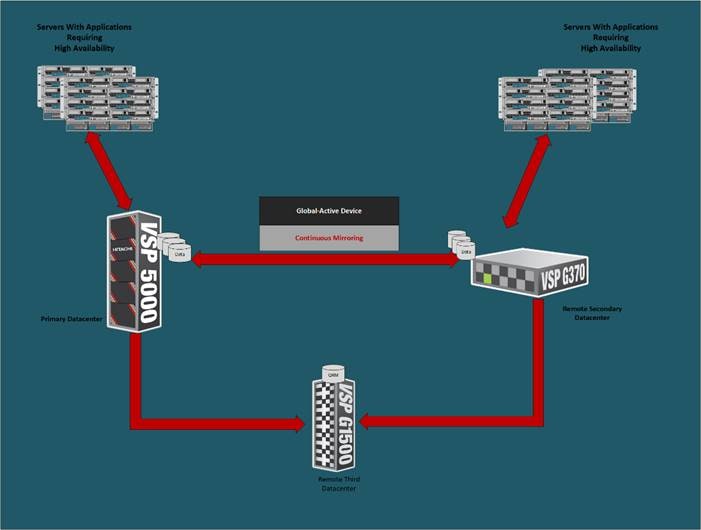

Hitachi GAD Technology

GAD enables you to create and maintain synchronous, remote copies of data volumes. A Virtual Storage Machine (VSM) is configured in the primary and secondary storage systems using the actual information of the primary storage system, and the GAD primary and secondary volumes are assigned the same virtual LDEV (Logical Device) number in the VSM. This enables the host to see the pair volumes as a single volume on a single storage system, and both volumes receive the same data from the host. A quorum disk, which can be in a third and external storage system or in an iSCSI-attached host server, is used to monitor the GAD pair volumes. The quorum disk acts as a heartbeat for the GAD pair, with both storage systems accessing the quorum disk to check on each other. A communication failure between systems results in a series of checks with the quorum disk to identify the problem so the system can receive host updates.

Figure 3 Global Active Device between Primary and Secondary Data Centers

VMware Native Multi-Pathing (NMP) on the host runs in the Active/Active configuration. While this configuration works well at campus distances, at metro distances Asymmetric Logical Unit Access (ALUA) is required to support optimized/nonoptimized paths and ensure that the shortest path is used. If the host cannot access the primary volume (P-VOL) or secondary volume (S-VOL), host I/O is redirected by the alternate path software to the appropriate volume without any impact to the host applications.

Hitachi Virtual Storage Platform 5000 Series

The Hitachi Virtual Storage Platform 5000 series is comprised of the following:

· Hitachi VSP 5000 Series – The Foundation for a Modern Storage Infrastructure. A completely new, enterprise-class, flash array and the next evolution of the Hitachi VSP series. Its innovative, scale-out design is optimized for NVMe (non-volatile memory express) and SCM (Storage class memory).

· SVOS RF - Legendary Hitachi Resilience and Performance, Optimized for NVMe. A new version of SVOS RF (v.9.x) which has been optimized for the VSP 5000 series scale-out, NVMe design. It incorporates AI and ML to reduce cost with intelligent tiering that automates data placement to assure that your data always resides on the most optimized tier.

To ensure the best possible customer experiences, Hitachi Virtual Storage Platform (VSP) 5000 series reliably delivers more data faster than ever for open systems and mainframe applications. The enterprise-level VSP 5000 series starts as small as 3.8TB and grows to 69PB of raw capacity. With scalability to handle up to 21 million IOPS, multiple application workloads can be consolidated for cost savings. All models in the VSP 5000 series are backed by the industry’s most comprehensive 100 percent data availability guarantee to ensure that your operations are always up and running. Hitachi Remote Ops monitoring system and Hitachi Ops Center Analyzer enable industry-leading uptime.

Challenges to digital business initiatives include sprawling storage resources that operate in silos, slow response times that frustrate you, inadequate security and lengthy deployment processes for new IT services. VSP 5000 series was designed to meet these challenges head-on.

NVMe Done Right

The all-flash VSP 5000 series is the best choice for blazing flash performance with response times as low as 70 microseconds.

Cost-Saving Capacity Efficiency

Adaptive data reduction (ADR), provided by Hitachi Storage Virtualization Operating System RF (SVOS RF), enables organizations to improve storage utilization, reduce storage footprint and control costs. ADR offers selectable compression and deduplication for all media, which can be enabled at a volume level, making the system tunable. With guaranteed total efficiency rates of up to 7:1, you save on not only capacity purchases but also floor-space consumption, utility charges and support costs.

Optimize Operations with Artificial Intelligence

All VSP systems are packaged with Hitachi Ops Center Analytics. Our AI-powered solution constantly analyzes telemetry to optimize application performance and prevent extended outages. It works with Hitachi Ops Center Automator to maintain best practices and quality of service (QoS). Manual administrative tasks are streamlined and implemented with fewer errors, speeding addition of new applications and expansion of existing applications.

Simple, Easy-to-Use Management

The VSP family can be set up quickly and managed with ease using Hitachi Ops Center Administrator and its intuitive graphical user interface. RESTAPIs allow integration with existing toolsets and automation templates to further consolidate management tasks. Reduce complexity of steps needed to deploy, monitor and reconfigure storage resources to centralize administrative operations.

Protect Your Data from Unauthorized Access

The VSP 5000 models are hardened to prevent any leaks of physical data and unauthorized system access. Additional measures are available to ensure quick recovery from ransomware attacks.

Resiliency You Can Rely On

Leveraging hot-swappable components, nondisruptive upgrades and outstanding data protection, the VSP 5000 series offers complete system redundancy and is backed by our 100% data availability guarantee. Quadruple redundancy protects against failures even during routine maintenance.

Virtualized Storage Management and Monitoring

Consolidate existing silos and simplify IT by virtualizing all storage under a single pane. External storage systems benefit from the data services that the VSP 5000 series delivers, including data reduction, metro clustering and Automation.

Modernized Consumption Models

In addition to standard capital expenditure (capex) and leasing models, we offer pay-per-use utility pricing and cloud-based consumption services to reduce upfront acquisition costs and better align what you pay with what you use.

Enjoy Peace of Mind

Comprehensive business continuity can be assured with active-active clustering via GAD. Enabling robust business-continuity solutions across multiple data centers, GAD offers zero downtime: Performance stays high, even when data protection is running.

Requirements

Each site needs to be deployed following the steps detailed in the Deployment Guide for Cisco and Hitachi Adaptive Solutions for Converged Infrastructure with Cisco ACI.

· The Primary site (DC1 in our reference), will conform as closely to the Deployment Guide as is appropriate to the customer environment.

· The Multi-Pod configuration section below to configure the IPN and the Spines/Leaf Switches in the Secondary site (DC2 in our reference).

· When the ACI fabric is setup/extended to the second site, DC2 can be completed with some minor changes that will be noted in this document.

These sites need to be connected by fibre or a multicast enabled L3 network for IP traffic, supported long reach fibre channel connections, and conform to the following requirements:

· Less than 10ms RTT for communication for IP and fibre channel traffic.

· GAD compliant VSP devices at each location.

· A third independent location hosting a VSP resource to act as a mediator.

· Processor compatibility between Cisco UCS servers across the differing sites to be able to support vMotion across sites (optionally configure vSphere EVC if there is a disparity).

Physical Topology

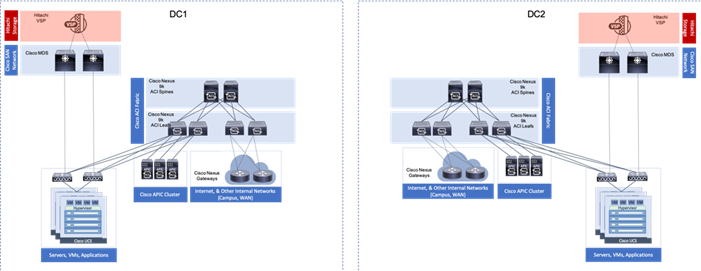

The physical topology is based on the design detailed in the Adaptive Solutions for Converged Infrastructure with Cisco ACI Design Guide, as shown in Figure 4.

Figure 4 Adaptive Solutions for Converged Infrastructure with Cisco ACI

This data center architecture is powerful and resilient, and can be implemented in multiple locations but would be limited in base connectivity as to what might be available through a connecting WAN as shown in Figure 5.

Figure 5 Adaptive Solutions at Multiple Locations

Provisioning in this manner may work for locations with greatly differing functions, and little need for collaboration. Industry trends toward collaboration speak of a much different need, with groups commonly dispersed across locations and a need to maintain functionality in the event of an entire location facing a disruption.

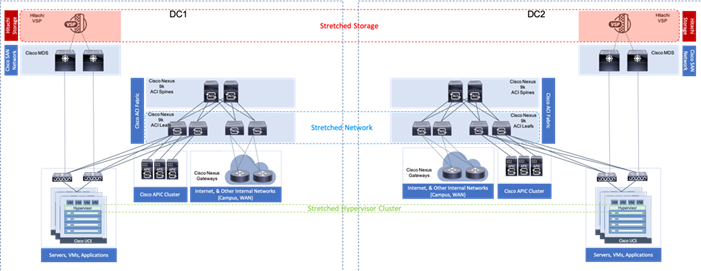

These needs for greater collaboration and resiliency across locations can be met by building a uniform experience between locations stretching the storage, network, and compute resources between data center resources as shown in Figure 6.

Figure 6 A Uniform Stretched Data Center

With the Adaptive Solutions Stretched Data Center architecture, the technologies of Hitachi GAD and Cisco ACI Multi-Pod are incorporated to expand the original architecture into a uniform and resilient infrastructure of stretched storage, network and compute, extended across potentially geographically displaced locations as shown in Figure 7.

Figure 7 Adaptive Solutions Stretched Data Center with Cisco Multi-Pod and Hitachi GAD

This reference architecture has been validated in Cisco labs with the assistance of Hitachi Vantara. Components and configurations shown should not always be considered to be prescriptive to the solution as the customer will have options for hardware and software not specified in this document. However, the interoperability guidelines from both companies should be referred to when departing from the validated design.

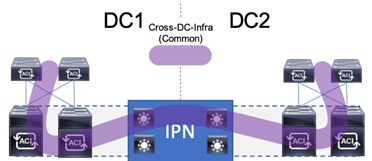

Network Design

The ACI design in this architecture leverages Multi-Pod by implementing an Inter-Pod Network (IPN) to enable traffic across the leaves and spines of separated pods without requiring a direct mesh topology. The IPN is not part of the ACI fabric, but it connects to each pod through one or more spine switches within each pod. The design specifics of the pods within each DC of the stretched architecture can be found in the previously mentioned Adaptive Solutions for Converged Infrastructure with Cisco ACI Design Guide.

ACI Multi-Pod Design

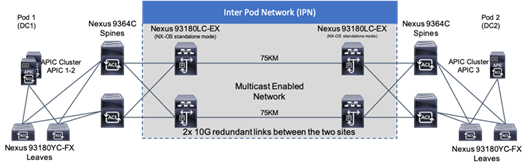

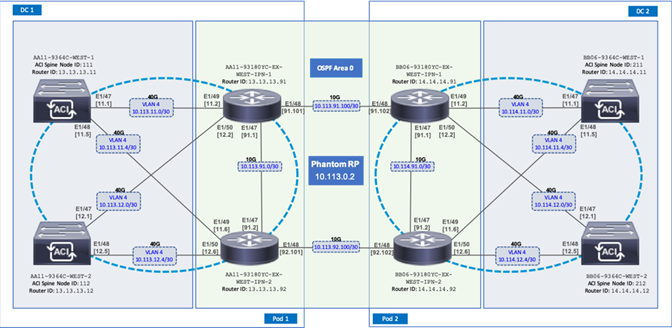

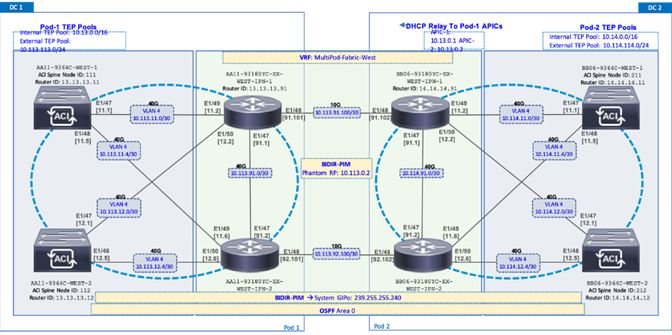

The ACI Multi-Pod design used in this CVD is shown in Figure 8.

Figure 8 Adaptive Solutions ACI Multi-Pod Design

IPN Configuration

The IPN consists of two Nexus switches in each data center connected using a 10Gbps 75 km long fiber. The dual link design provides high availability in case of a link failure. Each spine is connected to each of the Nexus using a 10Gbps connection for a fully redundant setup.

Each Nexus is configured for the following features to support the Multi-Pod network:

PIM Bidir Configuration

In addition to unicast communication, Layer 2 multi-destination flows belonging to bridge domains that are extended across Pods must also be supported. This type of traffic is usually referred to as Broadcast, Unknown Unicast and Multicast (BUM) traffic and it is exchanged by leveraging VXLAN data plane encapsulation between leaf nodes. Inside a Pod (or ACI fabric), BUM traffic is encapsulated into a VXLAN multicast frame and it is always transmitted to all the local leaf nodes. In order to flood the BUM traffic across Pods, the same multicast used inside the Pod is also extended through the IPN network. PIM bidir enables this functionality on the IPN devices.

OSPF Configuration

OSPF is enabled on Spine switches and IPN devices to exchange routing information between the Spine switches and IPN devices.

DHCP Relay Configuration

In order to support auto-provisioning of configuration for all the ACI devices across multiple Pods, the IPN devices connected to the spines must be able to relay DHCP requests generated from ACI devices in remote Pods toward the APIC node(s) active in the first Pod.

Interface VLAN Encapsulation

The IPN device interfaces connecting to the ACI Spines are configured as sun-interfaces with VLAN encapsulation value set to 4.

MTU Configuration

The IPN devices are configured for maximum supported MTU value of 9216 to handle the VxLAN overhead.

TEP Pools and Interfaces

In Cisco ACI Multi-Pod setup, unique Tunnel Endpoint (TEP) Pools are defined on each site. In this CVD, these pools are 10.11.0.0/16 and 10.12.0.0/16 for the two data centers.

External TEP

The pod connection profile uses a VXLAN TEP (VTEP) address called the External TEP (ETEP) as the anycast shared address across all spine switches in a pod. This IP address should not be part of the TEP pool assigned to each pod and is therefore selected outside the two networks listed above. The IP addresses used in the two data centers are 10.241.249.1 and 10.242.249.1.

APIC Controller Considerations

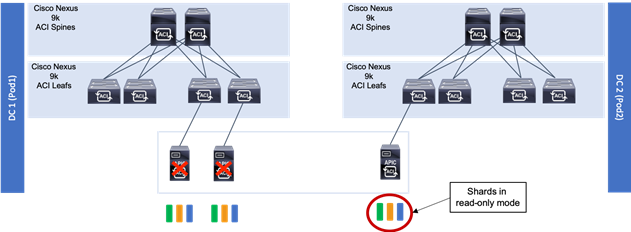

The ACI Multi-Pod fabric brings interesting considerations for the deployment of the APIC controller cluster managing the solution. To increase the scalability and resiliency of the design, APIC supports data sharding for data stored in the APIC. The basic idea behind sharding is that the data repository is split into several database units, known as ‘shards’ and the shard is then replicated three times, with each copy assigned to a specific APIC appliance. In a three node APIC cluster, one replica of each shard exists on every node. In this scenario, if two of the three nodes become unavailable, the shards in third node become read-only because of lack of quorum and stay in read-only mode until the other nodes become accessible again.

Figure 9 APIC Nodes and Data Sharding

In Figure 9, the three APIC nodes are distributed across the two data centers. In case of a split-brain scenario where two data centers cannot communicate to each other over the IPN, this implies that the shards on the APIC nodes in Pod1 would remain in full ‘read-write’ mode, allowing a user connected there to make configuration changes however the shards in Pod2 will move to a ‘read-only’ mode. Once the connectivity issues are resolved and the two Pods regain full connectivity, the APIC cluster would come back together and any change made to the shards in majority mode would be applied also to the rejoining APIC nodes.

To mitigate this scenario, customers can deploy a 3 nodes APIC cluster with two nodes in Pod1 and one node in Pod2 and then add a fourth backup APIC node in Pod2 to handle the full site failure scenario. The backup APIC server however should only be brought into action if a long-term connectivity outage or data center maintenance is expected. For typical short-term outages, three node cluster should suffice in most scenarios.

For more information about APIC cluster and sizing recommendations, consult the Multi-Pod design white paper: https://www.cisco.com/c/en/us/solutions/collateral/data-center-virtualization/application-centric-infrastructure/white-paper-c11-737855.html

Management Network Considerations

The management networks used for virtualization between the two sites will be configured in what can be summarized as either a Brown Field scenario (integrating with existing infrastructure), or Green Field scenario (new placement originating from the ACI fabric).

Figure 10 Brown Field Management Source External to the ACI Fabric

The Brown Field situation was configured in the previous single DC ACI design. The management network, referred to as an In Band Management (DC[1,2]-IB within ESX/UCS and DC[1,2]-Internal-Infra within ACI) in the CVD, came in from an external switch and routing for that network was managed by a router upstream of that switch. Continuation of a Brown Field model can occur as shown in Figure 10, but some routing will need to be worked out external to the fabric if this implementation persists as traffic will need to traverse cross site.

Figure 11 Green Field Management from the ACI Fabric

A single unified Cross Data Center Management EPG illustrated in Figure 12 (referenced as Common in the deployment), provides the default connectivity needed for vCenter and other management VMs to exist within either site. As an option, site specific management that is configured within, or ported into the ACI fabric can valid. If site specific management is created or ported into the ACI fabric, it will need have one of the management EPGs extended as was mentioned previously.

These options are not prescriptive and should be selected as appropriate to the deployed environment. In the validated architecture, a mixture of these two scenarios was implemented as shown in Figure 12.

Figure 12 Cross DC Mgmt along with DC Specific Management

The above configuration sets up DC specific EPGs for physical and virtual infrastructure that wouldn’t be appropriate to exist outside of the specific DC, while maintaining the L3 gateway for these EPGs to set the availability to these resources through contracts and filters specified by the ACI fabric.

The Cross DC management network and the DC Internal Infra appropriate to the DC will be extended into the respective UCS domain for the location, and the hypervisors hosted within the UCS domain.

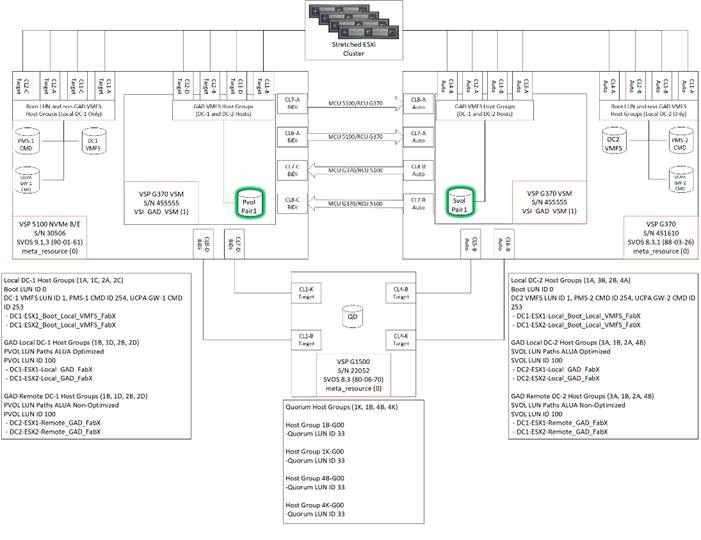

Storage Design

The solution explained in this CVD presents a VMware Metro Storage Cluster (vMSC) – compatible two-site stretched cluster utilizing Hitachi VSP storage, Cisco compute, and Cisco MDS Multilayer Fabric Switches. This architecture will allow synchronous active-active remote copies between your primary VSP G5100 at DC1 and VSP G370 at DC2. VSP G1500 is used as the 3rd quorum system at data center 3 to verify data consistency between data centers in respect to data in flight. GAD and vMSC allows storage high availability across both sites for enhanced protection of mission critical VM’s and applications running on the cluster. With this design, in the event of a path, replication link, primary system, secondary system, or wide area network (WAN) failure, the data being replicated with GAD will stay online and consistent, preventing down time. Utilizing VMware vSphere vCenter 6.7 U2 native multipathing (NMP), ALUA settings are honored from the Hitachi VSP’s for seamless failover to remove/standby hosts in the event of an outage. Figure 13 illustrates a fully built-out environment with Cisco VSI infrastructure utilizing Hitachi VSP 5000 with GAD technology.

Figure 13 Storage Configuration Between Systems Performing GAD Replication

Hitachi Global-Active Device (GAD)

As part of Hitachi SVOS, VSM technology ensures two physical systems are logically presented as one system. GAD feature implements cross-mirrored storage volumes between two Virtual Storage Platform systems accepting read/write I/Os on both sides that are continuously updated. If a disk controller failure occurs at one site, the controller at the other site automatically takes over and accepts read/write I/Os. It enables production workloads on both systems, while maintaining full data consistency and protection. The GAD feature assures that an active and up to date storage volume is available to a production application despite the loss of a virtualized controller, system or site.

During an outage, GAD by design will block I/O to the respective site where the failure occurred by pushing status PSUE (Pair Split under Error) to the volume and redirects all I/O to the alternate site automatically by honoring ALUA settings with the use of NPM.

For more information about GAD, go to:

https://knowledge.hitachivantara.com/Documents/Management_Software/SVOS/9.1.x/Global-Active_Device

During an outage where pairs are suspended to either a PSUE or PSUS (Pair Split under Suspend) state, you must correct the cause of the outage and resync the active-active volume set so the same data is consistent at the primary site as well as the secondary site. For information on disaster recovery of GAD go to:

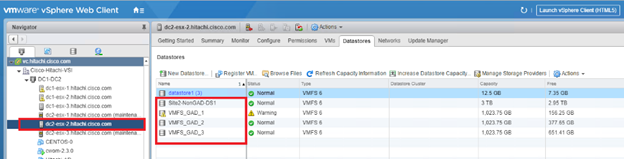

Optionally, you have the choice between using data center specific storage or highly available GAD datasets, within this deployment you will be able to use other Hitachi management products to allocate a VMFS datastore to your virtual environment.

VMware vSphere Metro Storage Cluster (vMSC)

A vMSC environment on Hitachi Virtual Storage Platform G and F series storage systems provides an ideal solution for maximizing availability and uptime. It clusters physical data centers within metro distances. This Metro Storage Cluster solution from Hitachi Vantara consists of storage systems presenting replicated storage as a single LUN from different geographically distributed sites. This design enables high availability of services by allowing virtual machine migration between sites with no downtime. A combination of software and hardware products from Hitachi Vantara provides the following key functions to a vSphere infrastructure:

· Host multipathing

· Internal and externalized storage provisioning

· Synchronous storage replication across metro cluster distances

· Transparent storage failover

· Host access via uniform (recommended) or non-uniform topology

These functions work together with VMware vSphere vMotion, VMware vSphere High Availability, and VMware vSphere Distributed Resource Scheduler to build this reference architecture for vMSC. The advanced functions found in Hitachi Virtual Storage Platform G and F series storage systems do the following:

· Fulfill the requirements of a virtual infrastructure

· Lessen the need for additional hardware that may be required in traditional Metro Storage Cluster solutions

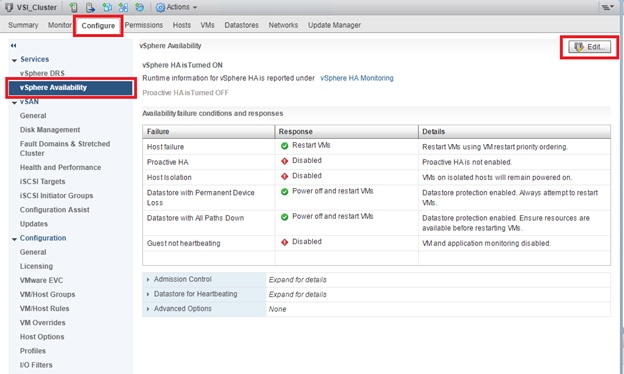

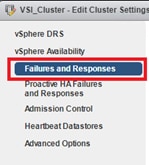

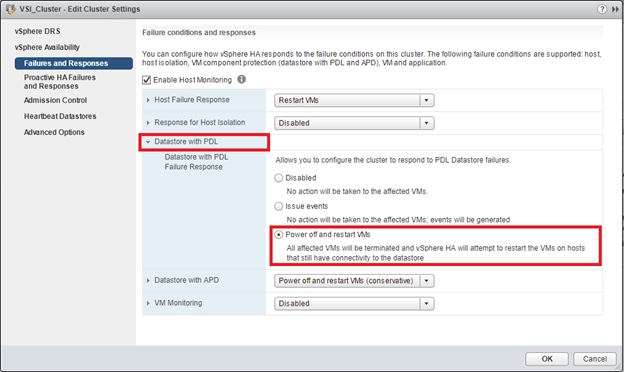

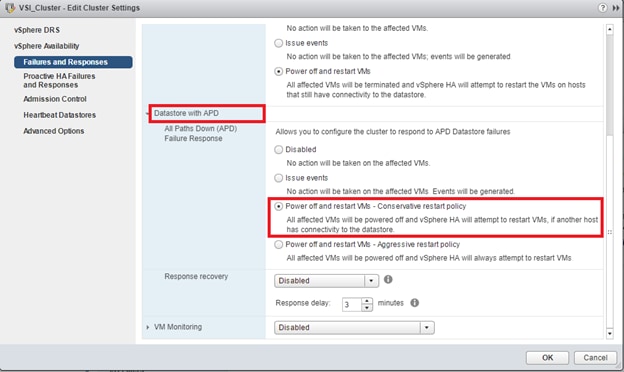

vMSC supports Stretched Storage, leveraging GAD which provides a single stretched datastore across the data center. Within this design VM Component Protection (VMCP) is used to protect virtual machines from storage related events, such as Permanent Device Loss (PDL) and All Paths Down (APD). This paper shows the pre-designed, pre-configured, and pre-validated solution as a reference architecture that is comprised of both a VMware vSphere stack and Stretched Storage stack, leveraging vMSC and GAD on Cisco UCS VSI infrastructure.

VMware Support

Hitachi VSP F series and G series are aligned with the VMware software-defined storage vision, providing the following support:

· vMSC: Using Hitachi GAD, you can create and maintain synchronous, remote copies of data volumes. A VSM is configured in the primary and secondary storage systems using the actual information of the primary storage system, and the GAD primary and secondary volumes are assigned the same virtual LDEV number in the VSM. This enables the host to see the pair volumes as a single volume on a single storage system, and both volumes receive the same data from the host. Configuring GAD as the backend storage for a vMSC provides an ideal solution for maximizing availability and uptime by clustering physical data centers that reside within metro distances of each other.

· Hitachi Storage Provider for VMware vCenter: Hitachi Storage Provider works with VMware vSphere API for Storage Awareness (VASA) to provide access to Hitachi VSP F series and G series. Storage Provider enables policy-based storage management using VMware Storage Policy-based Management (SPBM) and VMware Virtual Volumes (VVols). Storage Provider provides a simplified method for VMware admins and storage admins to deliver effective storage that meets advanced VM requirements.

· vSphere Storage APIs - Array Integration (VAAI): VAAI enables multiple storage functions (primitives) within vSphere to be offloaded to certified storage hardware. This reduces ESXi processor utilization by allowing certified storage hardware to perform these functions on the storage systems themselves. In many cases, VAAI accelerates these functions allowing them to complete in less time as compared to performing the functions within software on the ESXi host.

· Hitachi Storage Replication Adapter (SRA): Hitachi Storage Replication Adapter (SRA) for VMware Site Recovery Manager provides a disaster recovery (DR) solution that works with both your storage environment and your VMware environment. Arrays at both sites are "paired" during Site Recovery Manager configuration, and VMware administrators use the Site Recovery Manager application to manage the configuration and definition of the DR plan.

· vStorage API for Multipathing (VAMP): Hitachi VSP F series and G series support VAMP to provide enhanced control of I/O path selection and failover.

· vStorage API for Data Protection (VADP): Hitachi VSP F series and G series support VADP to enable backup applications to perform file-level or VM-level backup of running virtual machines.

Multipathing

VMware Native Multi-Pathing (NMP) is the multipathing software that integrates with GAD to provide load balancing, path optimization, path failover, and path failback capabilities for vSphere hosts. NMP will load-balance I/O between all available preferred paths (Active) from P-VOL and keep all paths to S-VOL as active non-optimized paths. NMP rules for the Hitachi VSP are now enabled by default within vSphere, this allows for reduction in time to production when deploying new vSphere ESXi clusters connected to Hitachi Storage. The rules will handle devices configured with ALUA to be used for GAD. In this guide, VMware’s Native Multi-Pathing is used.

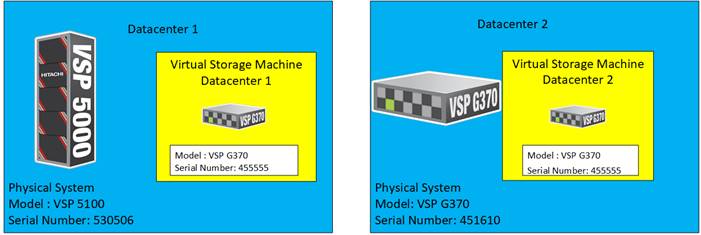

Virtual Storage Machine (VSM)

The VSM is the unit that is used to manage virtualized storage system resources. You create a VSM within a physical storage system to enable the host server to recognize two storage systems as one virtual storage system.

When GAD is used to provide nondisruptive host access to volumes that reside on different storage systems, a VSM for the storage system that contains the primary volumes (P-VOLs) of the GAD pairs is created in the secondary storage system. For GAD, the primary storage system is the virtualized storage system.

Figure 14 illustrates the relationship between a (physical) storage system and VSM’s.

Figure 14 VSM Emulation Between Systems Performing GAD Replication

In this design, a common VSM model of VSP G370 was emulated. To learn more about VSM emulation types, go to:

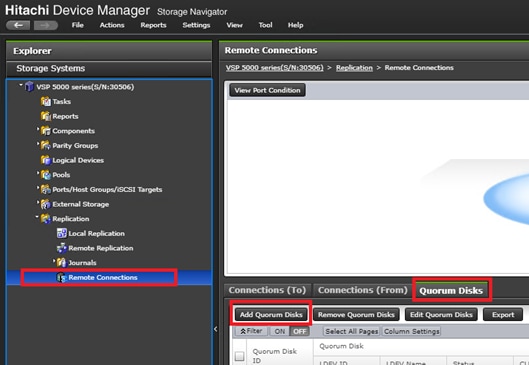

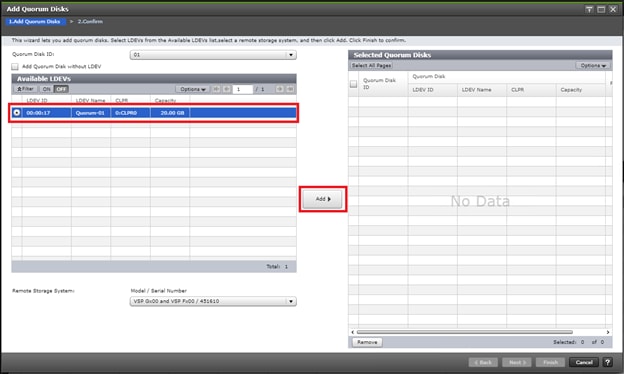

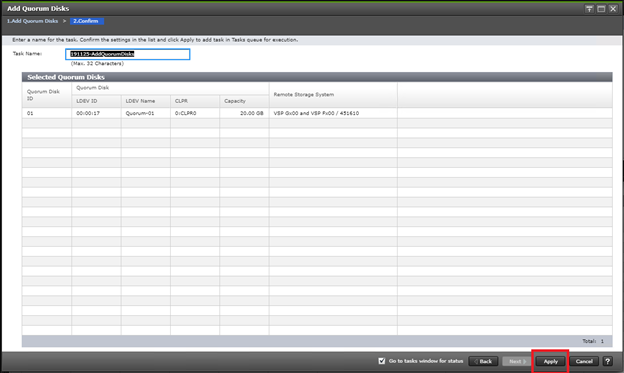

Quorum

A quorum entity that is external to either system is normally in a separate location is used to determine the operational control when certain failure occurs to avoid split-brain scenarios. In a vMSC using VSP platform, there are various options for providing quorum services including a separate Storage system (including any supported 3rd party storage that can be attached to VSP G/F platform) or presenting an iSCSI disk from physical/virtual machine from 3rd site or cloud. In the case of this design guide, it is recommended to keep the quorum system at a third site. This is utilized in disaster situations where the primary or secondary data center is offline and the quorum is used to maintain data consistency between VSPs in GAD replication, and to verify fail over.

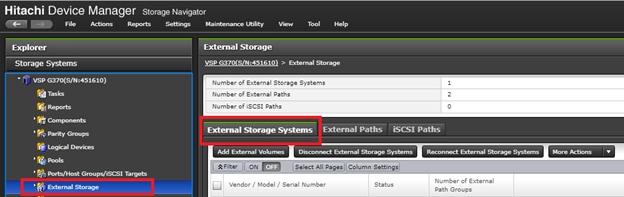

Example: External storage system: A 20GB LUN is created on an external storage array such as VSP G1500 or other supported external 3rd party storage array for use as a quorum disk. This LUN is presented to the Site 1 VSP and Site 2 VSP as externalized storage by virtue of VSP G/F platform SVOS virtualization device capability. The quorum disk stores continually updated information about data consistency in Hitachi GAD P-VOLs and S-VOLs for use during site failover operations. GAD uses the information in the event of a failure, to direct host operations to the other volume within the pair.

Quorum Links

In this design, two External Paths are configured between DC1 VSP 5100 storage and VSP G1500 quorum storage, and two more External paths between site 2 VSP G370 storage and VSP G1500 quorum storage.

Replication Links Main Control Unit and Remote Control Unit (MCU/RCU)

A storage replication link consists of bidirectional ports on the DC1 storage system connected to a remote-control unit bidirectional port that is defined on the DC2 storage system. It represents a bidirectional remote copy connection from the primary data volume (P-VOL) on the DC1 storage system to the secondary data volume (S-VOL) on the DC2 storage system. Within this design 2 sets of MCU/RCU paths are defined for storage replication.

Cisco MDS

The SAN of the Adaptive Solutions Stretched Data Center uses pairs of MDS switches at each location. These switches operate as parallel networks within each location and are connected across the data centers to their location counterparts with Inter-switch Links (ISL) as shown in Figure 15.

Figure 15 Adaptive Solutions SAN Connecting the Two Data Centers

Symmetry is not required between the differing MDS pairs across the two locations, with DC1 using a pair of director class MDS 9700 series switches, and DC2 utilizing a pair of 9148Ts. Also, these switches across the extended SAN can be easily managed for both Zones and Devices Aliases through an optional DCNM implementation supporting both sites.

The zoning utilized within the MDS will enable access to boot LUNs, GAD VMFS datastores, as well as non-GAD VMFS datastores. The GAD resources from the opposing DC will be presented within the zoning to enable VMFS continuity in the event of an availability incident in the DC that is somehow specific to the VSP.

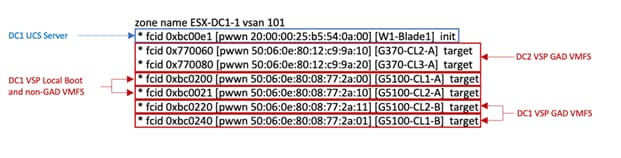

Figure 16 MDS A zoning for an example DC1 host

Created zonesets are shared across the DCs through the ISL connected SAN fabrics. Zones created for a host initiator in one DC will have its zone present across the ISL in the opposing DC to expose the VSP GAD targets from both sides.

Compute Design

Cisco UCS Domain Configuration

The Cisco UCS compute for both environments were configured in an identical manner, relying on the respective DC internal infra networks for the Cisco UCS FI management interfaces.

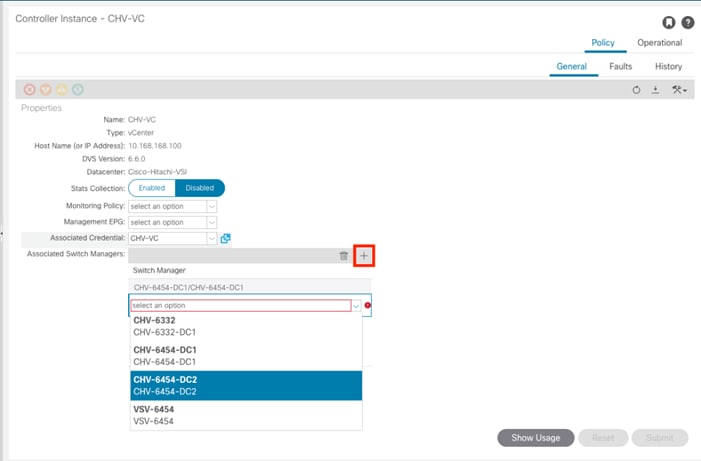

The ACI-vmm-[#] networks are configured to each UCS domain as needed through the ACI UCSM Integration. Both UCS domains are set as Switch Managers for the ACI Virtual Machine Manager (VMM) configured for the vCenter spanning both data centers. When an EPG is associated to the VMM, the VLAN allocated to it within the VMM is configured on the Cisco UCS FI uplinks, and appropriate vNIC templates associated with the hypervisor uplinks connected to the VMM managed vDS.

Consistency of the UCS Service Profiles between the two UCS domains was handled manually during the deployment, but could have been more efficiently synchronized by utilizing Cisco UCS Central (not explained in this validation) to create Global Service Profiles.

Cisco Management Components

Additional benefits are brought to the solution with the optional inclusion of CWOM and Cisco Intersight.

· Registering the Cisco UCS Fabric Interconnects and the vCenter to CWOM gives scaling visibility to components, tracking resources that are constrained or under used within the infrastructure.

· Registering the UCS Fabric Interconnect to Intersight gives extensive visibility into the UCS domain health, firmware revision compliance, and expedited support with Cisco TAC.

Virtualization Design

The virtualization design includes topics already mentioned in the previous storage and compute design sections, with additional specifics explained in the published Adaptive Solutions for Converged Infrastructure with Cisco ACI Design Guide.

Figure 17 vSphere Cluster in the Adaptive Solutions Stretched Data Center

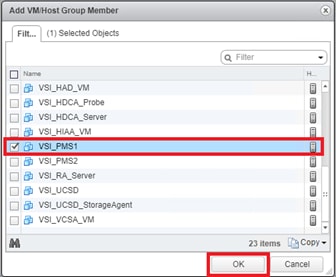

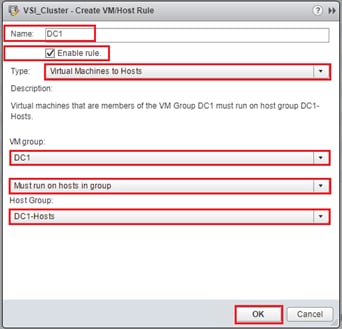

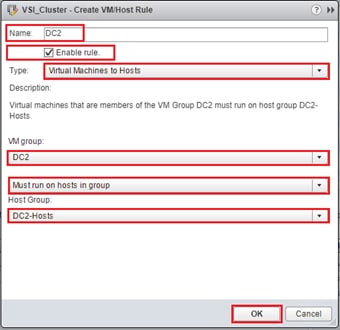

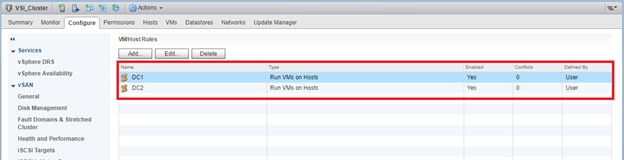

The deployment example shown in Figure 17 is shown with a single vSphere DRS cluster spanning both data center locations. Within this cluster there are three categories of VMs:

· VMs that should stay in DC1

· VMs that should stay in DC2

· VMs that can reside in either DC

VMs that will be specific to a DC are deployed to the Non-GAD storage associated with that DC, while all cross DC VMs will be deployed to GAD storage. The network port-groups will similarly need to be mapped to VMs appropriate for either mobility between locations, or an affinity to a specific DC. With these factors accounted for, VM Groups and Host Group pairings are created to lock VMs to the ESXi hosts of a particular DC if they should not traverse between DC locations either through DRS or an HA event.

Both the vCenter instance and the Active Director server participated in this cluster during the validation, residing on GAD storage and associated with the Common network to be available in either DC during the event of a site down scenario during testing. vSphere HA rules are negotiated by the hypervisor hosts during an event, so the availability of vCenter is not required for VM powerups to occur. This example is not intended to be a requirement of the deployment, but exists as an example of the resiliency that the solution can provide.

Architecture

Cisco and Hitachi Adaptive Solutions for Converged Infrastructure is a validated reference architecture targeting Virtual Server Infrastructure (VSI) implementations. The architecture was originally built for a single data center around the Cisco Unified Computing System (Cisco UCS) and the Hitachi Virtual Storage Platform (VSP) connected together by Cisco MDS Multilayer SAN Switches, and with the Cisco Application Centric Infrastructure using Cisco Nexus Switches. This single data center design is stretched across two potentially geographically displaced locations using the technologies of the Cisco Multi-Pod Design and Hitachi GAD, which will both be explained in these deployment instructions.

Deployment

The Adaptive Solutions Stretched Data Center utilizes the deployment guide of the published Adaptive Solutions for Converged Infrastructure with ACI for each of the DC placements shown in the architecture.

Cisco and Hitachi Adaptive Solutions for Converged Infrastructure with Cisco ACI Deployment Guide

You must complete the first DC setup using the steps and specifications found in the deployment guide and it’s required before beginning the Multi-Pod Deployment. This deployment guide will be referred to going forward as the DC1 Deployment Guide,

These deployments will differ slightly from the DC1 Deployment Guide, and components changed will be noted as follows:

Table 2 Validated Hardware and Software

| Component |

DC Location |

Software Version/Firmware Version |

|

| Network |

Cisco Nexus 93180YC-FX (leaf) |

Both |

14.2(1j) [14.1(2g) in initial release] |

| Cisco Nexus 9364C (spine) |

Both |

14.2(1j) [14.1(2g) in initial release] |

|

| Cisco APIC M2 |

Both |

4.2(1j) [4.1(2g) in initial release] |

|

| Cisco ExternalSwitch |

Shared |

1.1 [1.0 in initial release] |

|

| Compute |

Cisco UCS Fabric Interconnect 6454 |

Both |

4.0(4d) [4.0(4b) in initial release] |

| Cisco UCS 2208XP IOM |

Both |

4.0(4d) [4.0(4b) in initial release] |

|

| Cisco UCS B200 M5 |

Both |

4.0(4d) [4.0(4b) in initial release] |

|

| VMware vSphere |

Both |

6.7 U2 VMware_ESXi_6.7.0_13006603_Custom_Cisco_6.7.2.1.iso |

|

| ESXi 6.7 U2 nenic |

Both |

1.0.29.0 |

|

| ESXi 6.7 U2 nfnic |

Both |

4.0.0.40 [4.0.0.38 in initial release] |

|

| VMware vCenter Server Appliance |

Shared |

6.7 U2 |

|

| VM Virtual Hardware Version |

Both |

13 |

|

| Storage |

Hitachi VSP 5100 |

DC1 |

90-01-61 (SVOS 9.1.3) |

| Hitachi VSP G370 |

DC2 |

88-03-23 (SVOS 8.3.1) |

|

| Hitachi Storage Plugin for vCenter |

Shared |

4.1.0 [3.10.0 in initial release] |

|

| Hitachi Command Control Interface (CCI) |

|

01-52-03/01 |

|

| Cisco MDS 9706 |

DC1 |

8.3(2) [8.3(1) in initial release] |

|

| Cisco MDS 9148T |

DC2 |

8.3(2) |

|

| Cisco DCNM |

DC1 |

11.2(1) |

|

The VLANs used in each environment will be nearly identical, with the exception of the insertion of a DC2 specific VLAN for infrastructure placed in DC2 as opposed to DC1.

Table 3 VLANs used in the Deployment

| VLAN Name |

VLAN Purpose |

ID Used in Validating this Document |

Note |

| DC1-IB |

DC1 VLAN for Internal Infrastructure (UCSM/VSP) |

119 |

Referred to as Internal-Infra in the initial release, but will be utilized for physical and virtual infrastructure specific to DC1 |

| DC2-IB |

DC2 VLAN for Internal Infrastructure (UCSM/VSP) |

219 |

|

| Common |

VLAN for Shared Infrastructure (AD/DNS) |

319 |

|

| Host-Mgmt |

VLAN for Hypervisor Hosts (ESXi) |

419 |

|

| vMotion |

VLAN for vSphere vMotion traffic |

519 |

|

| Native |

VLAN to which untagged frames are assigned |

2 |

|

| App-vDS-[1-100] |

VLAN for Application VM Interfaces residing in vDS based port groups |

1100-1199 |

|

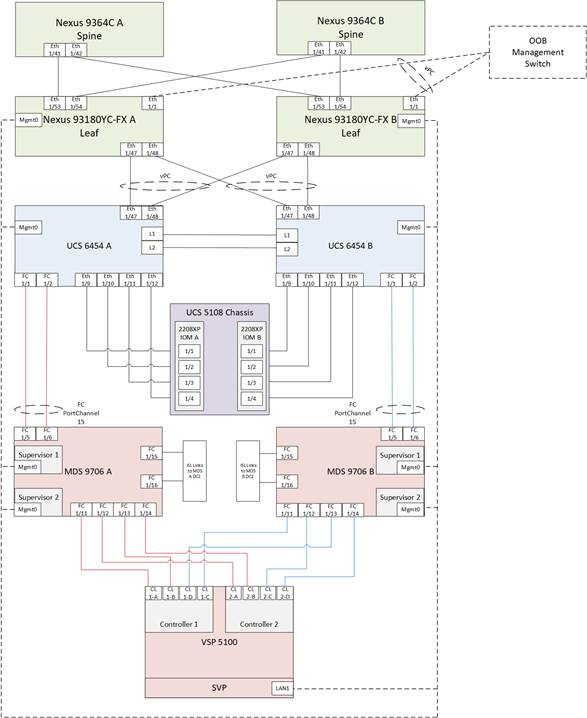

DC1 Physical Cabling for the Cisco UCS 6454 with the VSP 5100

Figure 18 Cabling Configuration used in the DC1 Design Featuring the Cisco UCS 6454 and the VSP 5100

Table 4 Cisco Nexus 93180YC-FX A Cabling Information for DC1

| Local Device |

Local Port |

Connection |

Remote Device |

Remote Port |

| Cisco Nexus 93180YC-FX A |

Eth1/1 |

GbE |

GbE |

Any |

| Eth1/47 |

25GbE |

Cisco UCS 6454 FI A |

Eth 1/47 |

|

| Eth1/48 |

25GbE |

Cisco UCS 6454 FI B |

Eth 1/47 |

|

| Eth1/53 |

40GbE or 100GbE |

Cisco 9364C A (Spine) |

Eth 1/41 |

|

| Eth1/54 |

40GbE or 100GbE |

Cisco 9364C B (Spine) |

Eth 1/41 |

|

| MGMT0 |

GbE |

GbE management switch |

Any |

Table 5 Cisco Nexus 93180YC-FX B Cabling Information for DC1

| Local Device |

Local Port |

Connection |

Remote Device |

Remote Port |

| Cisco Nexus 93180YC-FX B |

Eth1/1 |

GbE |

GbE |

Any |

| Eth1/47 |

25GbE |

Cisco UCS 6454 FI A |

Eth 1/48 |

|

| Eth1/48 |

25GbE |

Cisco UCS 6454 FI B |

Eth 1/48 |

|

| Eth1/53 |

40GbE or 100GbE |

Cisco 9364C A (Spine) |

Eth 1/42 |

|

| Eth1/54 |

40GbE or 100GbE |

Cisco 9364C B (Spine) |

Eth 1/42 |

|

| MGMT0 |

GbE |

GbE management switch |

Any |

Table 6 Cisco UCS 6454 FI A Cabling Information for DC1

| Local Device |

Local Port |

Connection |

Remote Device |

Remote Port |

| Cisco UCS 6454 FI A |

FC 1/1 |

32Gb FC |

MDS 9706 A |

FC1/5 |

| FC 1/2 |

32Gb FC |

MDS 9706 A |

FC1/6 |

|

| Eth1/9 |

10GbE |

Cisco UCS Chassis 2208XP FEX A |

IOM 1/1 |

|

| Eth1/10 |

10GbE |

Cisco UCS Chassis 2208XP FEX A |

IOM 1/2 |

|

| Eth1/11 |

10GbE |

Cisco UCS Chassis 2208XP FEX A |

IOM 1/3 |

|

| Eth1/12 |

10GbE |

Cisco UCS Chassis 2208XP FEX A |

IOM 1/4 |

|

| Eth1/47 |

25GbE |

Cisco Nexus 93180YC-FX A |

Eth1/47 |

|

| Eth1/48 |

25GbE |

Cisco Nexus 93180YC-FX B |

Eth1/47 |

|

| L1 |

GbE |

Cisco UCS 6454 FI B |

L1 |

|

| L2 |

GbE |

Cisco UCS 6454 FI B |

L2 |

|

| MGMT0 |

GbE |

GbE management switch |

Any |

Table 7 Cisco UCS 6454 FI B Cabling Information for DC1

| Local Device |

Local Port |

Connection |

Remote Device |

Remote Port |

| Cisco UCS 6454 FI B |

FC 1/1 |

32Gb FC |

MDS 9706 A |

FC1/5 |

| FC 1/2 |

32Gb FC |

MDS 9706 A |

FC1/6 |

|

| Eth1/9 |

10GbE |

Cisco UCS Chassis 2208XP FEX B |

IOM 1/1 |

|

| Eth1/10 |

10GbE |

Cisco UCS Chassis 2208XP FEX B |

IOM 1/2 |

|

| Eth1/11 |

10GbE |

Cisco UCS Chassis 2208XP FEX B |

IOM 1/3 |

|

| Eth1/12 |

10GbE |

Cisco UCS Chassis 2208XP FEX B |

IOM 1/4 |

|

| Eth1/47 |

25GbE |

Cisco Nexus 93180YC-FX A |

Eth1/48 |

|

| Eth1/48 |

25GbE |

Cisco Nexus 93180YC-FX B |

Eth1/48 |

|

| L1 |

GbE |

Cisco UCS 6454 FI B |

L1 |

|

| L2 |

GbE |

Cisco UCS 6454 FI B |

L2 |

|

| MGMT0 |

GbE |

GbE management switch |

Any |

Table 8 Cisco MDS 9706 A Cabling Information for DC1

| Local Device |

Local Port |

Connection |

Remote Device |

Remote Port |

| Cisco MDS 9706 A |

FC 1/5 |

32Gb FC |

Cisco UCS 6454 FI A |

FC1/1 |

| FC 1/6 |

32Gb FC |

Cisco UCS 6454 FI A |

FC1/2 |

|

| FC 1/11 |

32Gb FC |

VSP 5100 Controller 1 |

CL1-A |

|

| FC 1/12 |

32Gb FC |

VSP 5100 Controller 2 |

CL2-A |

|

| FC 1/13 |

32Gb FC |

VSP 5100 Controller 1 |

CL1-B |

|

| FC 1/14 |

32Gb FC |

VSP 5100 Controller 2 |

CL2-B |

|

| FC 1/15 |

32Gb FC |

MDS 9148 T A |

FC 1/15 |

|

| FC 1/16 |

32Gb FC |

MDS 9148 T A |

FC 1/16 |

|

| Sup1 MGMT0 |

GbE |

GbE management switch |

Any |

|

| Sup2 MGMT0 |

GbE |

GbE management switch |

Any |

Table 9 Cisco MDS 9706 B Cabling Information for DC1

| Local Device |

Local Port |

Connection |

Remote Device |

Remote Port |

| Cisco MDS 9706 B |

FC 1/5 |

32Gb FC |

Cisco UCS 6454 FI B |

FC1/1 |

| FC 1/6 |

32Gb FC |

Cisco UCS 6454 FI B |

FC1/2 |

|

| FC 1/11 |

32Gb FC |

VSP 5100 Controller 1 |

CL1-C |

|

| FC 1/12 |

32Gb FC |

VSP 5100 Controller 2 |

CL2-C |

|

| FC 1/13 |

32Gb FC |

VSP 5100 Controller 1 |

CL1-D |

|

| FC 1/14 |

32Gb FC |

VSP 5100 Controller 2 |

CL2-D |

|

| FC 1/15 |

32Gb FC |

MDS 9148 T B |

FC 1/15 |

|

| FC 1/16 |

32Gb FC |

MDS 9148 T B |

FC 1/16 |

|

| Sup1 MGMT0 |

GbE |

GbE management switch |

Any |

|

| Sup2 MGMT0 |

GbE |

GbE management switch |

Any |

DC2 Physical Cabling for the Cisco UCS 6454 with the VSP G370

Figure 19 Cabling Configuration used in the DC2 Design Featuring the Cisco UCS 6454 and the VSP G370

Table 10 Cisco Nexus 93180YC-FX A Cabling Information for DC2

| Local Device |

Local Port |

Connection |

Remote Device |

Remote Port |

| Cisco Nexus 93180YC-FX A |

Eth1/1 |

GbE |

GbE |

Any |

| Eth1/49 |

40GbE |

Cisco UCS 6454 FI A |

Eth 1/53 |

|

| Eth1/50 |

40GbE |

Cisco UCS 6454 FI B |

Eth 1/53 |

|

| Eth1/53 |

40GbE or 100GbE |

Cisco 9364C A (Spine) |

Eth 1/5 |

|

| Eth1/54 |

40GbE or 100GbE |

Cisco 9364C B (Spine) |

Eth 1/5 |

|

| MGMT0 |

GbE |

GbE management switch |

Any |

Table 11 Cisco Nexus 93180YC-FX B Cabling Information for DC2

| Local Device |

Local Port |

Connection |

Remote Device |

Remote Port |

| Cisco Nexus 93180YC-FX B |

Eth1/1 |

GbE |

GbE |

Any |

| Eth1/49 |

40GbE |

Cisco UCS 6454 FI A |

Eth 1/54 |

|

| Eth1/50 |

40GbE |

Cisco UCS 6454 FI B |

Eth 1/54 |

|

| Eth1/53 |

40GbE or 100GbE |

Cisco 9364C A (Spine) |

Eth 1/6 |

|

| Eth1/54 |

40GbE or 100GbE |

Cisco 9364C B (Spine) |

Eth 1/6 |

|

| MGMT0 |

GbE |

GbE management switch |

Any |

![]() The connections for the 93180YC-FX switches to the Cisco UCS 6454 are using 40G ports in these cabling diagrams instead of the more readily available 25G ports from each platform. This is not a requirement, but intends to show that there is some potential capacity to use the higher bandwidth ports in this topology. Prior to use in this manner, the 40G 93180YC-FX ports will need to be converted to Downlink ports from the APIC.

The connections for the 93180YC-FX switches to the Cisco UCS 6454 are using 40G ports in these cabling diagrams instead of the more readily available 25G ports from each platform. This is not a requirement, but intends to show that there is some potential capacity to use the higher bandwidth ports in this topology. Prior to use in this manner, the 40G 93180YC-FX ports will need to be converted to Downlink ports from the APIC.

Table 12 Cisco UCS 6454 FI A Cabling Information for DC2

| Local Device |

Local Port |

Connection |

Remote Device |

Remote Port |

| Cisco UCS 6454 FI A |

FC 1/1 |

32Gb FC |

MDS 9148T A |

FC1/1 |

| FC 1/2 |

32Gb FC |

MDS 9148T A |

FC1/2 |

|

| Eth1/9 |

10GbE |

Cisco UCS Chassis 2208XP FEX A |

IOM 1/1 |

|

| Eth1/10 |

10GbE |

Cisco UCS Chassis 2208XP FEX A |

IOM 1/2 |

|

| Eth1/11 |

10GbE |

Cisco UCS Chassis 2208XP FEX A |

IOM 1/3 |

|

| Eth1/12 |

10GbE |

Cisco UCS Chassis 2208XP FEX A |

IOM 1/4 |

|

| Eth1/53 |

25GbE |

Cisco Nexus 93180YC-FX A |

Eth1/49 |

|

| Eth1/54 |

25GbE |

Cisco Nexus 93180YC-FX B |

Eth1/49 |

|

| L1 |

GbE |

Cisco UCS 6454 FI B |

L1 |

|

| L2 |

GbE |

Cisco UCS 6454 FI B |

L2 |

|

| MGMT0 |

GbE |

GbE management switch |

Any |

Table 13 Cisco UCS 6454 FI B Cabling Information for Cisco UCS 6454 to VSP G370

| Local Device |

Local Port |

Connection |

Remote Device |

Remote Port |

| Cisco UCS 6454 FI B |

FC 1/1 |

32Gb FC |

MDS 9148T B |

FC1/1 |

| FC 1/2 |

32Gb FC |

MDS 9148T B |

FC1/2 |

|

| Eth1/9 |

10GbE |

Cisco UCS Chassis 2208XP FEX B |

IOM 1/1 |

|

| Eth1/10 |

10GbE |

Cisco UCS Chassis 2208XP FEX B |

IOM 1/2 |

|

| Eth1/11 |

10GbE |

Cisco UCS Chassis 2208XP FEX B |

IOM 1/3 |

|

| Eth1/12 |

10GbE |

Cisco UCS Chassis 2208XP FEX B |

IOM 1/4 |

|

| Eth1/53 |

25GbE |

Cisco Nexus 93180YC-FX A |

Eth1/50 |

|

| Eth1/54 |

25GbE |

Cisco Nexus 93180YC-FX B |

Eth1/50 |

|

| L1 |

GbE |

Cisco UCS 6454 FI B |

L1 |

|

| L2 |

GbE |

Cisco UCS 6454 FI B |

L2 |

|

| MGMT0 |

GbE |

GbE management switch |

Any |

Table 14 Cisco MDS 9148 T A Cabling Information for Cisco UCS 6454 to VSP G370

| Local Device |

Local Port |

Connection |

Remote Device |

Remote Port |

| Cisco MDS 9148 T A |

FC 1/1 |

32Gb FC |

Cisco UCS 6454 FI A |

FC1/1 |

| FC 1/2 |

32Gb FC |

Cisco UCS 6454 FI A |

FC1/2 |

|

| FC 1/5 |

32Gb FC |

VSP G370 Controller 1 |

CL1-A |

|

| FC 1/6 |

32Gb FC |

VSP G370 Controller 2 |

CL2-B |

|

| FC 1/13 |

32Gb FC |

VSP G370 Controller 1 |

CL3-A |

|

| FC 1/14 |

32Gb FC |

VSP G370 Controller 2 |

CL2-A |

|

| FC 1/15 |

32Gb FC |

MDS 9706 A |

FC 1/15 |

|

| FC 1/16 |

32Gb FC |

MDS 9706 A |

FC 1/16 |

|

| Sup1 MGMT0 |

GbE |

GbE management switch |

Any |

|

| Sup2 MGMT0 |

GbE |

GbE management switch |

Any |

Table 15 Cisco MDS 9148 T B Cabling Information for Cisco UCS 6454 to VSP G370

| Local Device |

Local Port |

Connection |

Remote Device |

Remote Port |

| Cisco MDS 9148 T B |

FC 1/1 |

32Gb FC |

Cisco UCS 6454 FI B |

FC1/1 |

| FC 1/2 |

32Gb FC |

Cisco UCS 6454 FI B |

FC1/2 |

|

| FC 1/5 |

32Gb FC |

VSP G370 Controller 1 |

CL3-B |

|

| FC 1/6 |

32Gb FC |

VSP G370 Controller 2 |

CL4-A |

|

| FC 1/13 |

32Gb FC |

VSP G370 Controller 1 |

CL1-B |

|

| FC 1/14 |

32Gb FC |

VSP G370 Controller 2 |

CL4-B |

|

| FC 1/15 |

32Gb FC |

MDS 9706 B |

FC 1/15 |

Cisco ACI Multi-Pod Deployment

The Adaptive Solutions Stretched Data Center leverages a Cisco Multi-Pod ACI fabric design to extend the ACI fabric and the vSphere cluster across two data centers to provide business continuity in the event of a disaster. The ACI Pods can be in the same data center location or in different geographical sites. This design assumes the two Pods are in two different geographical locations that was validated in the Cisco labs using a 75km fiber spool to interconnect the data centers.

This section provides detailed procedures for setting up the Cisco ACI Multi-Pod Fabric. An Inter-Pod network is first deployed to provide connectivity between data centers, followed by the ACI fabric in the second DC location.

The procedures outlined in this section are specific to deploying a Cisco ACI Multi-Pod fabric.

Prerequisites

Before ACI Multi-Pod can be deployed, the first ACI fabric (or Pod-1) should be up and running with Spine switches, Leaf switches and APICs.

Topology

Figure 20 shows the connectivity between Pods through the IPN and the connectivity from each Pod to the IPN. The connectivity between IPN devices uses 10GbE but the Spine switches in each Pod connect to the IPN devices using 40GbE links. Multiple nodes and links are used from each Pod to IPN and between IPNs to provide a redundant paths between Pods in the event of failures.

Figure 20 ACI Multi-Pod Fabric

Deployment Overview

A high-level overview of the steps involved in deploying an ACI Multi-Pod fabric is summarized below.

Physical Connectivity

The following are the steps involved to set up the physical connectivity:

· Complete the physical connectivity within the Inter-Pod Network (IPN) to provide connectivity between the sites.

· Deploy Spine switches, Leaf switches and APIC(s) in the second ACI Pod. For discovery and auto-provisioning of the fabric in a new Pod, a Spine switch must have at least one link up to a Leaf switch.

· Complete the physical connectivity to connect Spine switches to the IPN in each Pod. It is not necessary to connect all Spines in a Pod to the IPN. For redundancy, two Spines in each Pod should be connected to the IPN. The connected Spine switches will be seen as equal cost paths to the Pod TEP addresses so connecting more Spine switches to the IPN also increases the number of Equal-Cost Multi-Paths (ECMP) routes to Pod networks. This leads to a greater distribution of traffic load.

Deploy Inter-Pod Network (IPN)

The following are the steps involved to deploy the inter-pod network:

· (Optional) Configure a VRF for ACI Multi-Pod traffic on all IPN devices and put the relevant interfaces in the VRF. This isolates the ACI Multi-Pod traffic and protects the ACI underlay network that is now exposed through the IPN. The IPN can be thought of as an extension of the ACI underlay infrastructure in each Pod. The underlay is necessary for establishing VXLAN tunnels between leaf switches and spine switches in each Pod. VXLAN tunnels enable seamless forwarding of Layer 2 and Layer 3 data plane traffic between Pods. The VXLAN overlay is essential for ensuring that the interconnected Pods function as a single ACI fabric.

· Configure Layer 2 encapsulation, Layer 2 protocols (LLDP, CDP), MTU (Jumbo) and IP addressing on relevant interfaces of the IPN devices that provide connectivity within the IPN, and between the IPN and Spines in each Pod. The Spine switches will tag all traffic towards the IPN using VLAN 4. Therefore, IPN devices must be configured for trunking using VLAN 4 on the interfaces connecting to the Spine. Enabling LLDP (preferred) or CDP on IPN interfaces is recommended for determining which ports connect to which devices. Encapsulating traffic in VXLAN adds 50 Bytes of overhead so in order to transport Jumbo (9000 Bytes) frames from endpoints across the IPN, the MTU on the IPN interfaces should be set to at least 50 Bytes higher to avoid fragmentation. MTU used in validation is 9216B as it is commonly used as a default jumbo MTU on many Cisco platforms, including Cisco UCS.

· Enable routing within the IPN and on the connections to Spines to advertise TEP pools between Pods. Each Pod uses a unique TEP pool that must be advertised to the other Pod in order to establish VXLAN Tunnels from one Pod to the other. The Spines in each Pod that connect to the IPN also use Proxy TEP addressing that are also advertised to the other Pods. The proxy TEP addressing enables each Spine to advertise equal cost routes for the Pod subnets to the IPN routers. IPN will use the ECMP to the Spines to distribute traffic to the Pod subnets. Loopback interfaces are used on IPN nodes are used as the router-id for the routing protocol. Currently, OSPFv2 is the only routing protocol supported. Note that underlay infrastructure in an ACI Pod uses ISIS and not OSPF. If the IPN is an extensive L3 network that is already using another routing protocol, it is not necessary to use OSPF everywhere in the IPN – it is only necessary between the Spine switches and IPN devices.

· Enable IP Multicast routing using Bidirectional PIM (BIDIR-PIM) to forward Broadcast, Unknown Unicast and Multicast (BUM) traffic between Pods. This is necessary when endpoints in the same Bridge Domain are distributed across both Pods, to enable seamless East-West (DC1 to DC2) communication between endpoints for multi-destination or non-unicast traffic. BUM traffic is encapsulated in a VXLAN multicast frame to transport it within or between Pods. In an ACI fabric, a multicast traffic within each Bridge Domain is sent to a unique IP multicast group address. The multicast address for the bridge domain is assigned when the bridge domain is first defined in ACI. The address is allocated from a pool of multicast addresses, known as Global IP Outside (GIPo) in ACI. To forward BUM traffic between Pods, the IPN needs to support IP multicast, specifically BIDIR-PIM. In ACI Multi-Pod, when a Bridge Domain is activated within a Pod, an IGMP Join is forwarded to the IPN to receive BUM traffic from remote endpoints in the same Pod. The multicast address pool used for BUM traffic for bridge domains that span the IPN can be the same as the infrastructure GIPo range used within a Pod or different pool can be allocated for this. BIDIR-PIM requires a Rendezvous Point (RP) to be defined. For RP resiliency, a phantom RP can be used. For distributing the RP load,

· Configure DHCP Relay on IPN devices to enable auto-configuration of Spines and APICs in Pod-2 from Pod-1.

Setup ACI Fabric for Multi-Pod

The following are the steps involved to set up the ACI fabric for Multi-Pod:

· Configure IP connectivity to connect Spine Interfaces to IPN devices in Pod-1.

· Configure Routing Protocols (OSPF, BGP) on the Spine Switches. OSPF will provide IP reachability between Pods, specifically between TEP address pools in each Pod. ACI Fabric will redistribute routes from IS-IS used within each Pod to OSPF and vice-versa. This effectively extends the underlay network (VRF overlay-1 in ACI Fabric) to the IPN. BGP will be used to advertise learned MAC and IP addresses of endpoints and their locations. The endpoint information is maintained on separate Counsel of Oracle Protocol (COOP) database on Spine switches on each Pod. Endpoints learned on each local Pod is advertised across the BGP-EVPN peering between Pods. The peering is directly between Spine switches in the Pods. When multiple Pods are connected across the IPN, BGP route-reflectors can be deployed in the IPN rather than direct peering between Pods.

· Configure External TEP Addresses for Spine switches to use for Spine-to-Spine connections across the IPN.

· Add a second Pod to the ACI fabric.

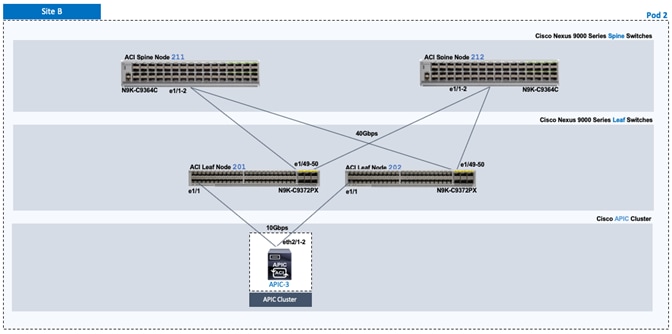

Setup Pod-2 Spine Switches, Leaf Switches, and APIC(s)

The following are the steps involved to set up the Pod-2 spine switches, leaf switches, and APIC(s):

· Configure ACI Fabric access policies to enable connectivity from Pod-1 Spines switches to the IPN.

· Configure newly discovered Spine and Leaf switches in Pod-2 from the first Pod.

· Configure ACI Fabric Access Policies to enable connectivity from Pod-2 Spines switches to the IPN.

· Deploy APIC(s) in Pod-2 to the APIC cluster that manages the fabric.

For additional information about ACI Multi-Pod, see the References section of this document and the ACI product documentation.

Deployment Guidelines

The following are the deployment guidelines:

· IPN must support an MTU of 50 Bytes higher than the MTU used by the endpoints in the deployment. In this design, the ACI Multi-Pod Fabric uses, by default, an MTU of 9000 Bytes or Jumbo frames for vMotion traffic. It is also possible for other (for example, Management, Applications) traffic to use Jumbo frames. The minimum MTU the IPN must support is therefore, 9050 Bytes but 9216 Bytes is recommended, in order to keep it consistent with default MTU on other Cisco platforms such as Cisco UCS.

· ACI Multi-Pod Fabric uses a VLAN ID of 4 for connectivity between Spine Switches and IPN devices in each Pod. This is system defined and cannot be changed – the IPN devices connecting to the Spines must therefore be configured to use VLAN 4.

· IPN device must support a BIDIR-PIM range of at least /15. First generation Nexus 9000 series switches cannot be used as IPN devices as the ASICS used on these support a max BIDIR-PIM range of /24.

· For auto-discovery and auto-configuration of newly added Spine switches to work, at least one Leaf switch must be online and connected to the Spine switch in the remote Pod. The Spine switch should be able to see the Leaf switch via LLDP.

· A Multi-Pod ACI fabric deployment requires the 239.255.255.240 (System GIPo) to be configured as a BIDIR-PIM range on the IPN devices. This configuration is not required when using the Infra GIPo as System GIPo feature. The APIC and switches must be running releases that support this feature.

· Spine switches from each Pod cannot be directly connected to each other – they must go through at least one IPN router/switch.

· It is not necessary to connect all Spines switches in a Pod to the IPN. If possible, connect at least two Spine switches from each Pod to the IPN to provide node redundancy in the event of a Spine switch failure. Traffic is distributed across all the spine switches that are connected to the IPN so more spine switches can be connected to distribute the load even further.

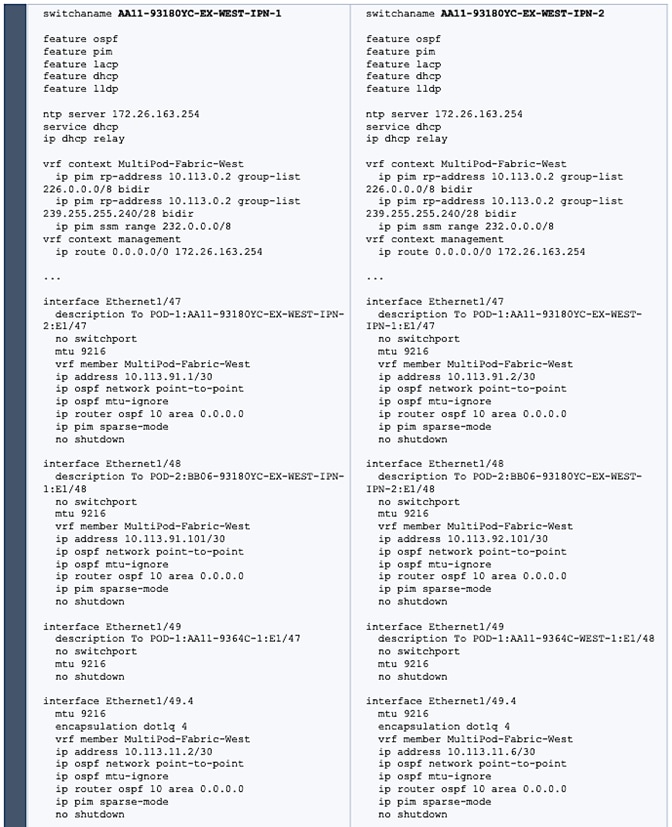

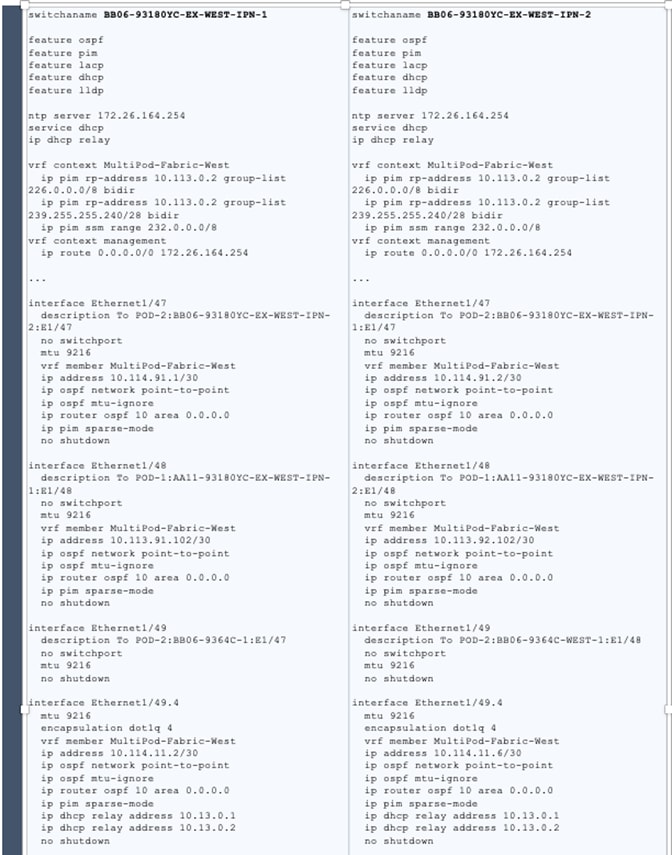

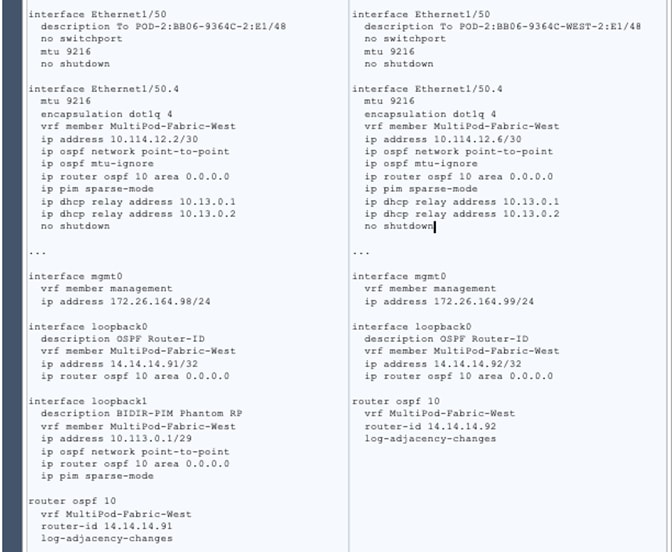

Deploy Inter-Pod Network

This section provides the configuration for deploying Inter-Pod switches that provide Pod-to-Pod connectivity. The IPN is not managed by the APIC. IPN can be thought of as an extension of the ACI underlay network. IPN devices must be enabled for L3 forwarding with VRF Lite (recommended), OSPF, DHCP Relay and BIDIR-PIM. LACP is also required when link bundling is deployed. LLDP is optional but recommended to verify connectivity to peers and ports used for the connection.

Deployment Overview

The high-level steps involved in the setting up the Inter-Pod Network is as follows:

· Complete the physical connectivity to connect devices in the IPN, to IPN devices in remote Pod and to Spine switches in local Pod

· Identify the information required to setup the IPN

· Configure IPN Devices in Pod-1 (DC1)

· Configure IPN Devices in Pod-2 (DC2)

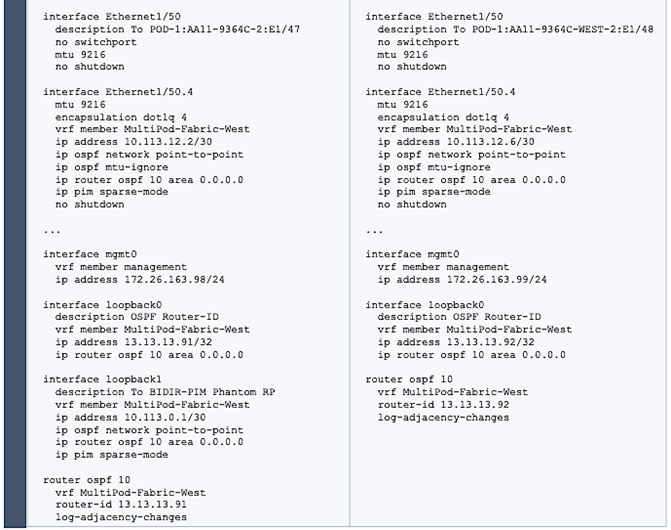

Physical Connectivity - Inter-Pod Network

Figure 21 illustrates the IPN connectivity between IPN devices and to Spine switches in each Pod. The connectivity between IPN devices uses 10GbE and 40GbE to Spine switches.

Figure 21 Inter-Pod Network Connectivity

Table 16 Configure IPN Devices in Pod-1 and Pod-2

Setup ACI Fabric for Multi-Pod – Using Configuration Wizard

In APIC Release 4.0(1) and higher, ACI Multi-Pod can be deployed using a configuration wizard that configures the fabric for Multi-Pod.

Prerequisites

The Inter-Pod network should be setup prior to configuring the ACI fabric for Multi-Pod.

Deployment Overview

Deploying ACI Multi-Pod using the APIC Configuration Wizard consists of the following high-level activities:

· Configure Interpod Connectivity - For connecting the first Pod or site to IPN and setting up Multi-Pod

· Add Physical Pod - For adding a second Pod or site in the Multi-Pod setup

The Configure Interpod Connectivity portion of the wizard is for setting up the first Pod or site (Pod-1) for the following:

· IP Connectivity from Spines in Pod-1 to the Inter-Pod network. This includes configuring the Spine interfaces that connect to the IPN for IP connectivity. The APIC on the back-end will take the minimal information provided to the wizard, to configure the necessary fabric access policies for connecting devices to the ACI fabric. This includes configuration of interface and switch-level, policies and profiles on the Spines connecting to the IPN.

· Routing Protocols to enable IP Routing on the Spines in Pod-1 towards the IPN. This includes OSPF-based underlay network for exchanging routes between the Pods and MP-BGP based overlay network for exchanging endpoint location information using MP-BGP EVPN.

· External TEP addressing for Pod-1 to communicate with other Pods or sites. This includes specifying a routable External TEP Pool for the first Pod or site.

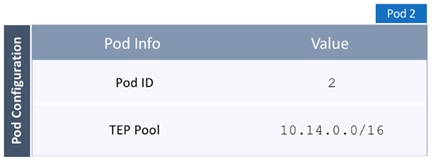

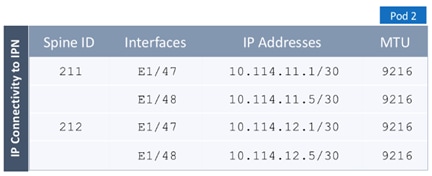

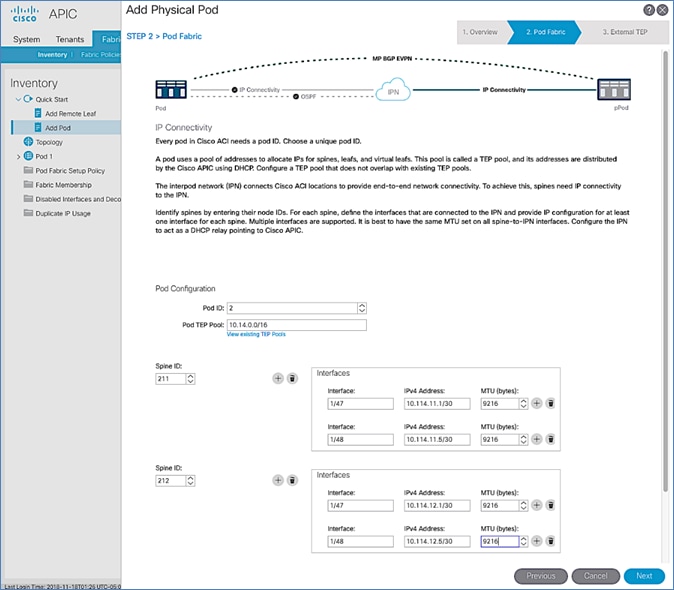

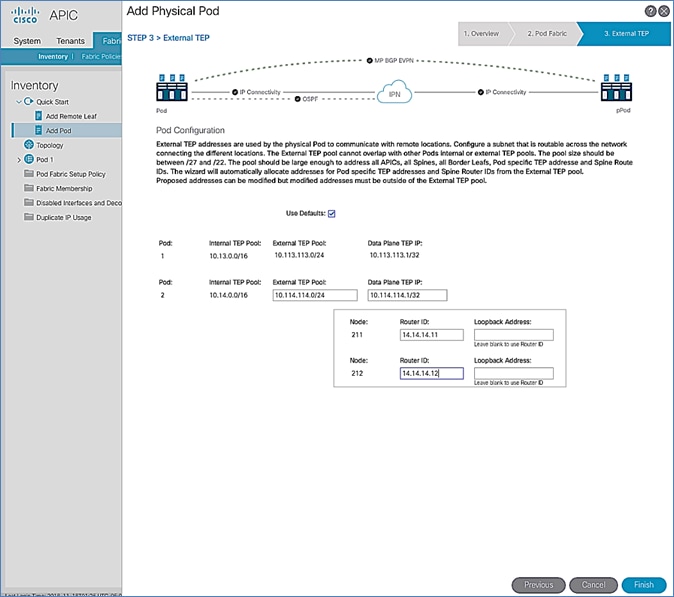

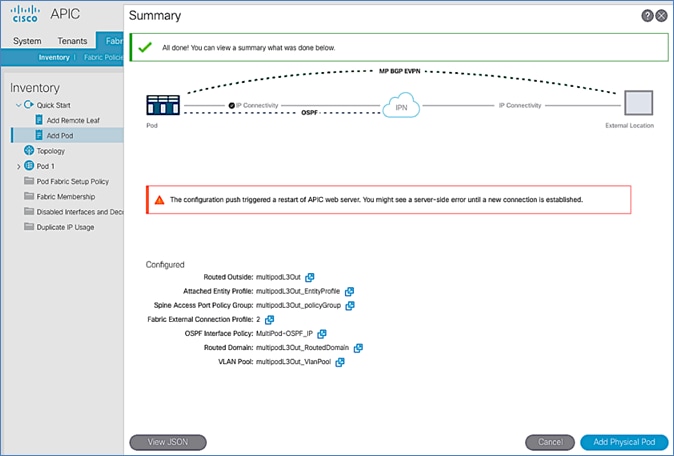

The Add Physical Pod portion of the wizard is for adding the second Pod or site (Pod-2) and consists of the following:

· Pod Fabric information for creating a second Pod. This includes specifying a unique Pod ID and TEP Pool for the new Pod. It also includes parameters for configuring IP connectivity from Spines in Pod-2 to the Inter-Pod network, similar to the information used in Pod-1 for connecting the Spines in Pod-1 to IPN.

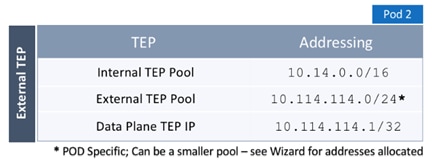

· External TEP addressing for Pod-2 to communicate with other Pods or sites. This includes specifying a routable External TEP Pool for the second Pod or site.

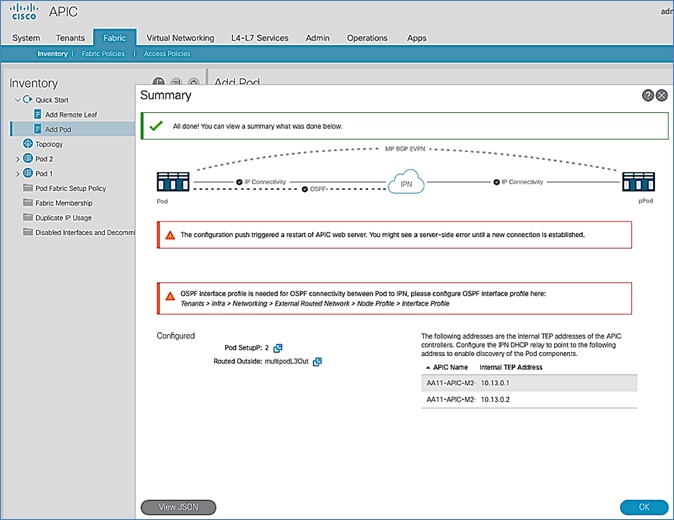

· Configure DHCP Relay on the IPN devices to point to the APIC TEP IP Addresses.

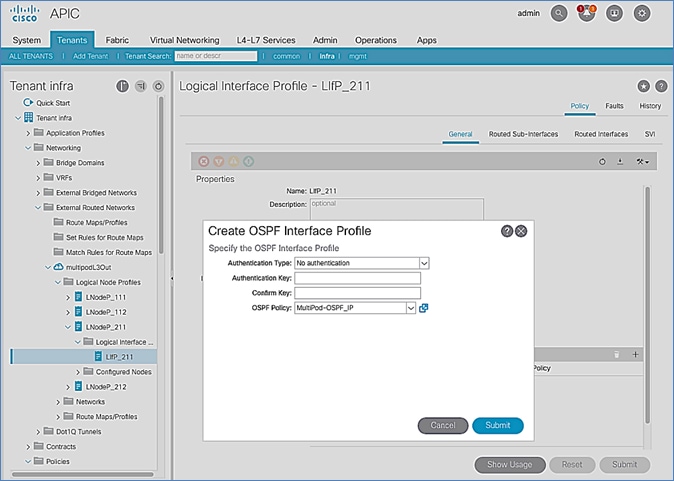

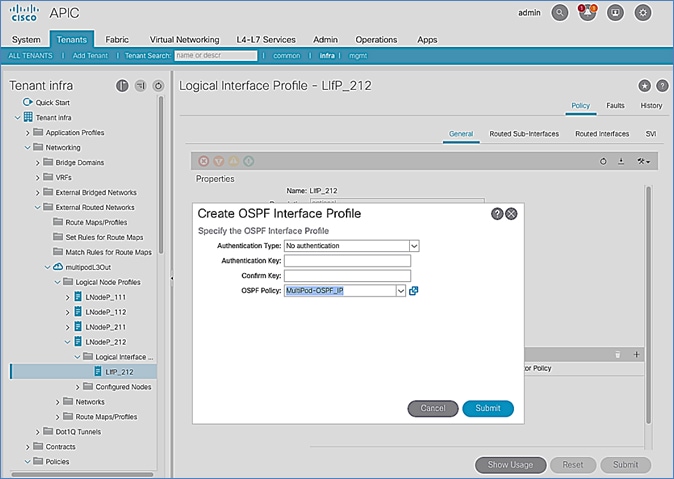

· Configure OSPF interface policies for Pod-2 Spine switches that connect to the IPN

The setup information and deployment steps for configuring Interpod connectivity and adding a Physical Pod using the Wizard are covered in the next sections.

Configure Inter-Pod Connectivity

Follow the procedures in this section to configure Inter-Pod connectivity to connect the Spine switches in Pod-1 to IPN and set up ACI Fabric for Multi-Pod.

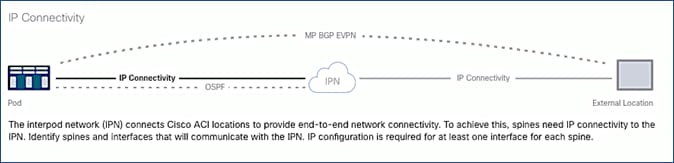

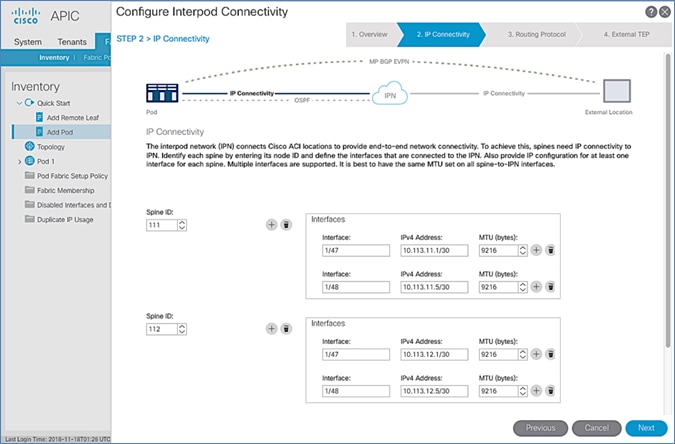

IP Connectivity

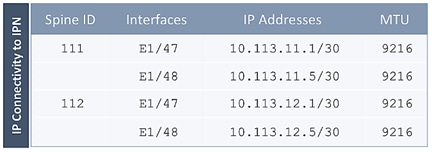

IP Connectivity section of the wizard provides the physical interface and IP configuration on the Spines switches in Pod-1 that connect to IPN devices. The parameters used in this CVD for this portion of the configuration is provided in Table 17 .

Table 17 IP Connectivity Information for Pod-1

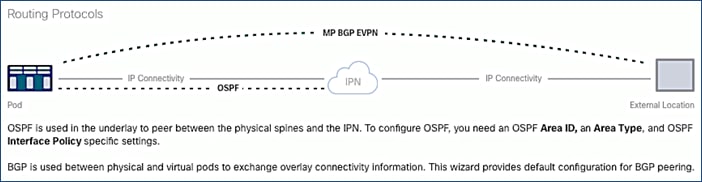

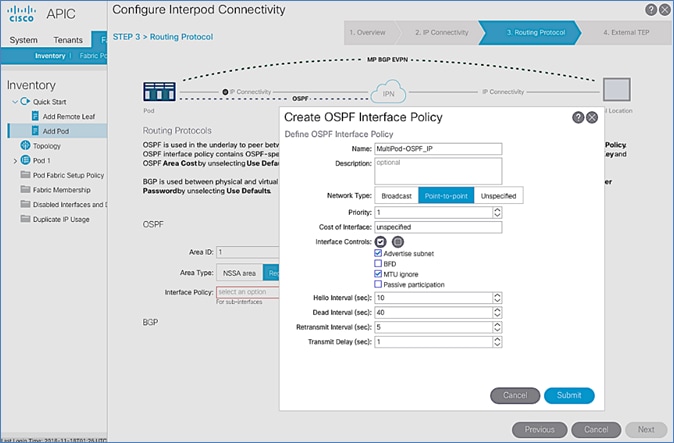

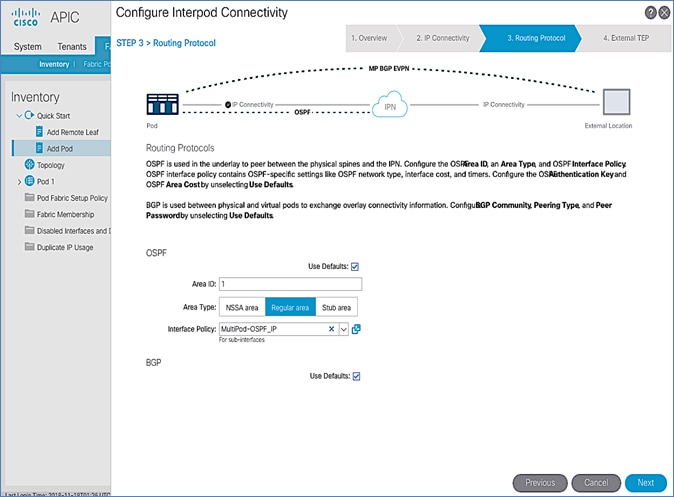

Routing Protocols

Routing Protocols section of the wizard provides the routing protocol (OSPF, BGP) configuration on the Spine switches in Pod-1 that connect to IPN to enable the OSPF based underlay network and MP-BGP based overlay. The parameters used in this CVD for this portion of the configuration is provided in Table 18 .

Table 18 Routing Protocols Information for Pod-1

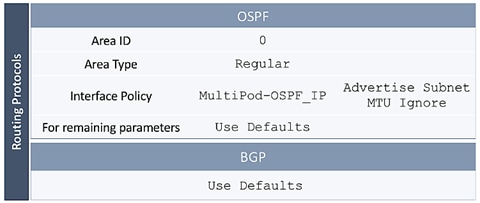

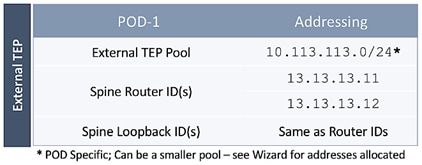

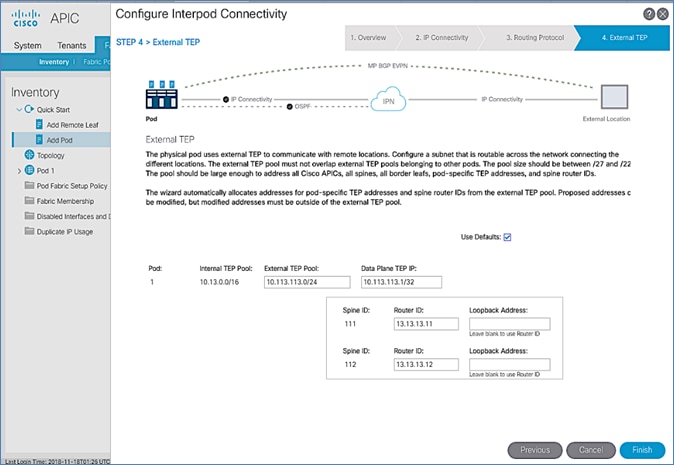

External TEP

External TEP section of the wizard provides the addressing configuration on the Spine switches to enabled Pod-to-Pod connectivity across the Inter-Pod network. The parameters used in this CVD for this portion of the configuration is provided in the Table 19 .

Table 19 External TEP Information for Pod-1

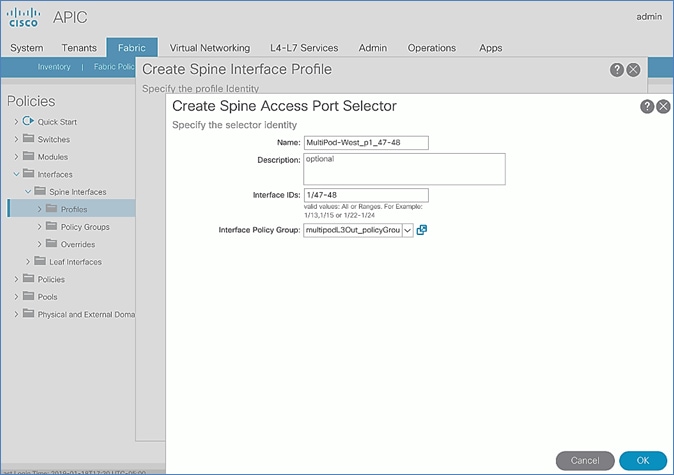

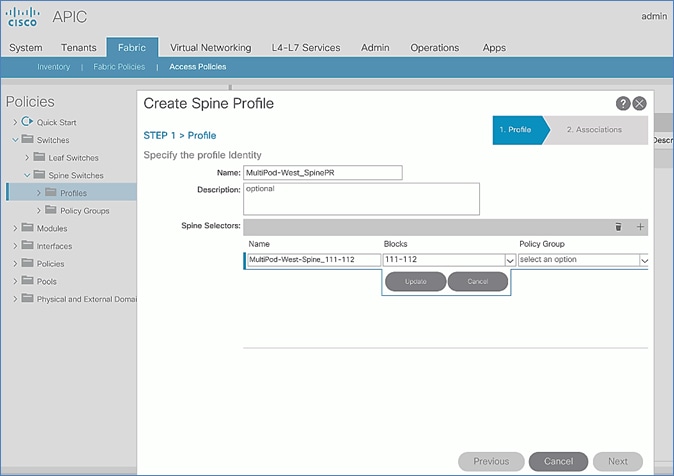

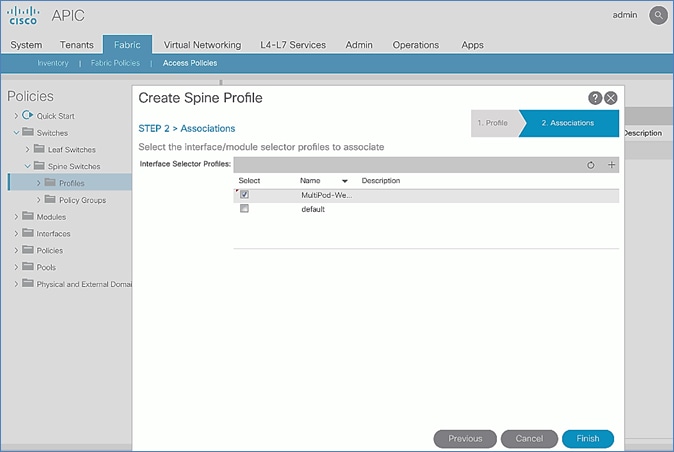

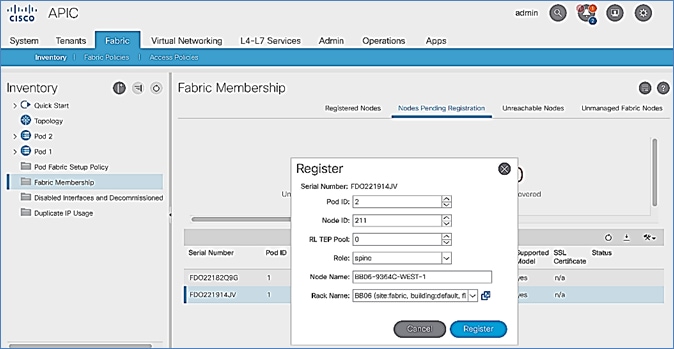

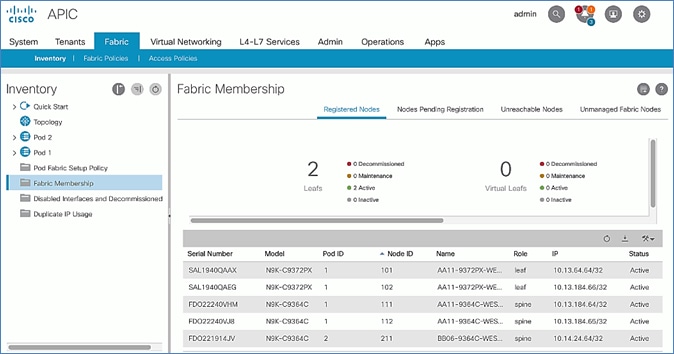

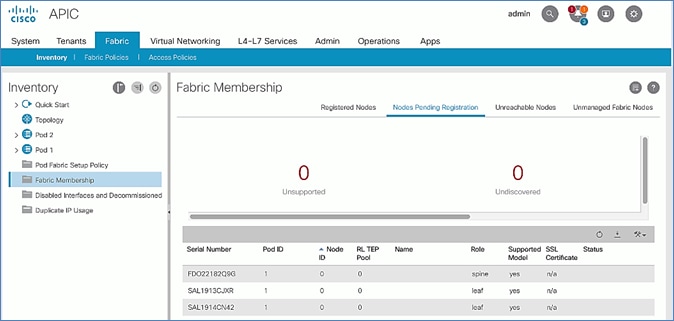

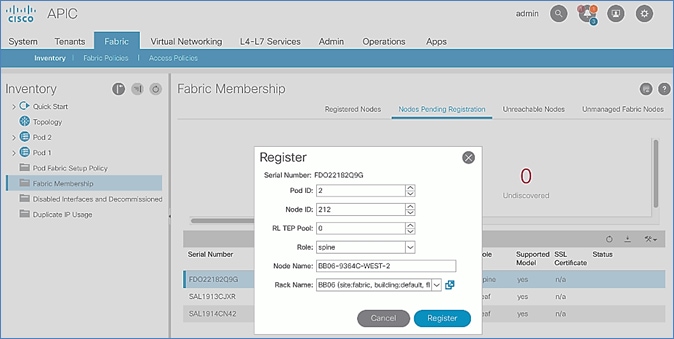

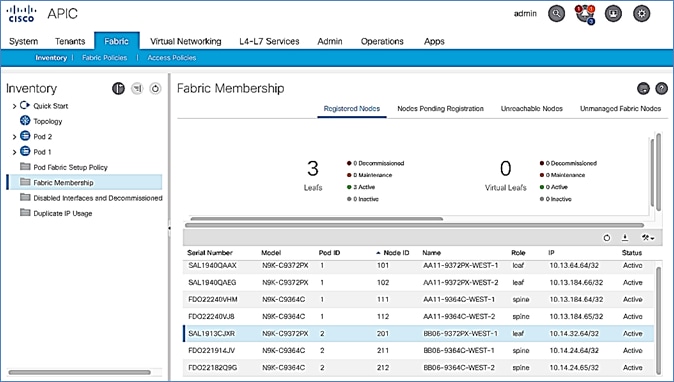

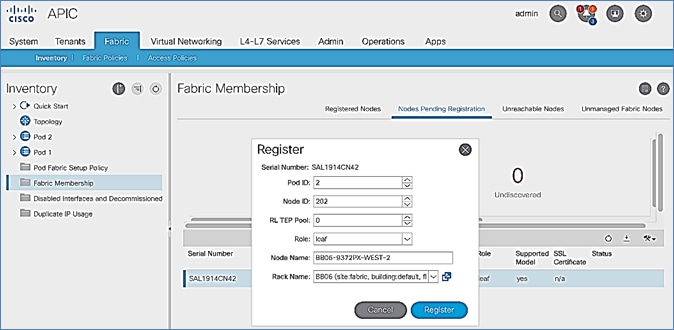

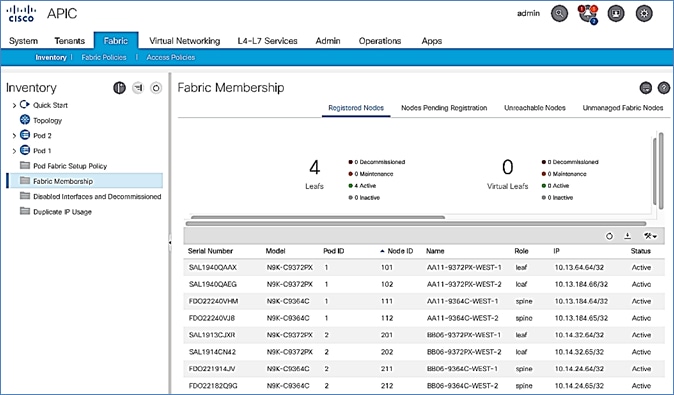

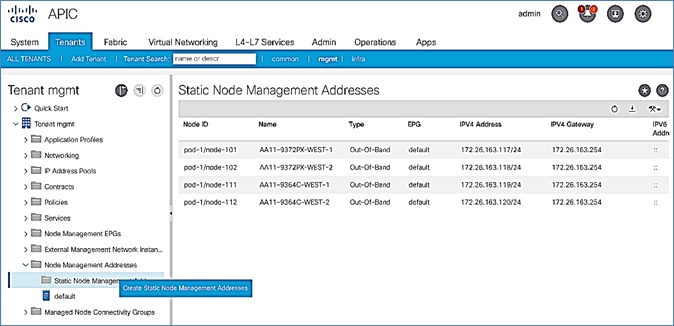

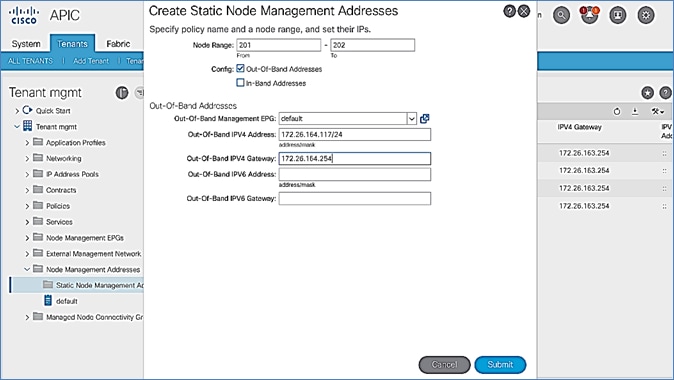

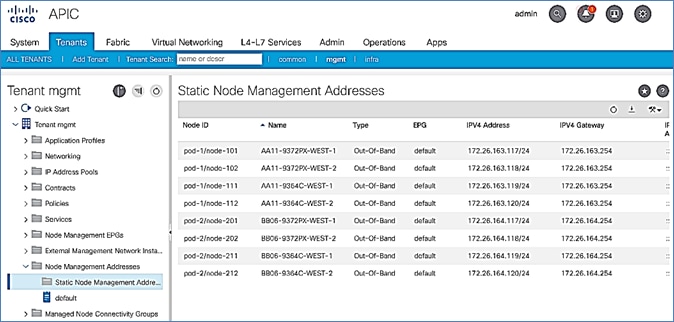

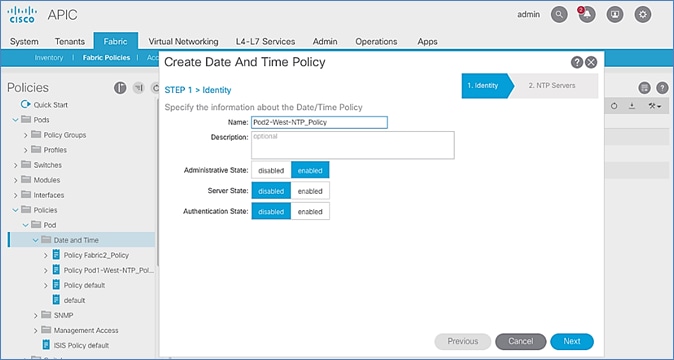

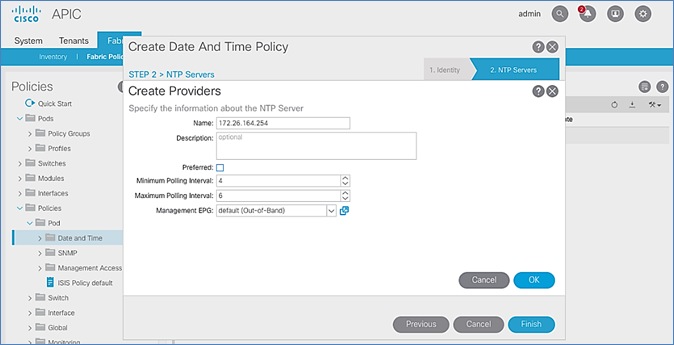

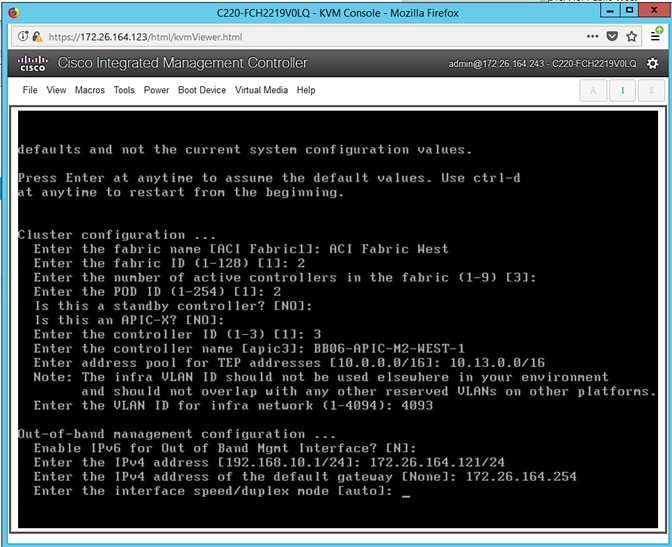

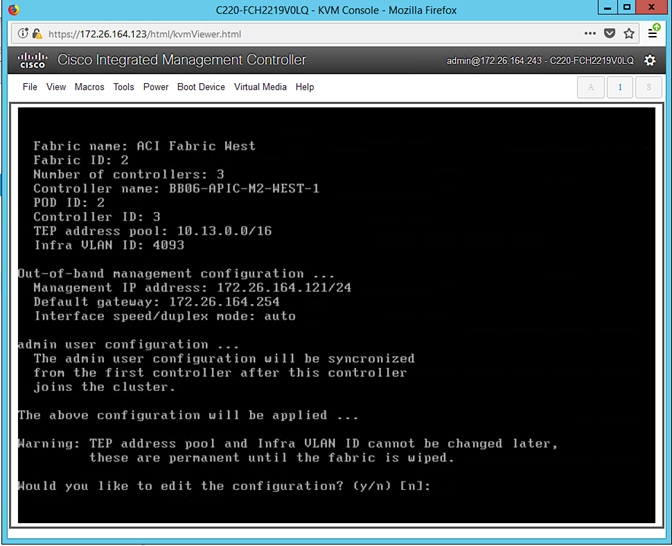

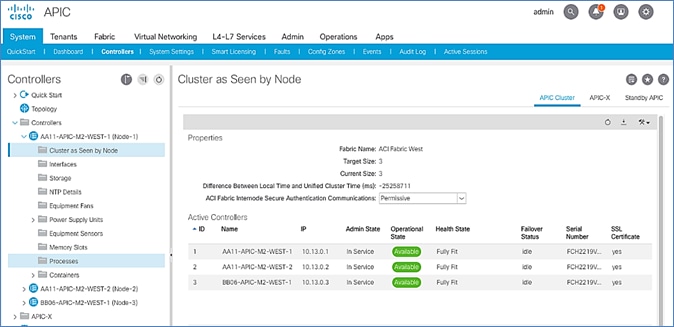

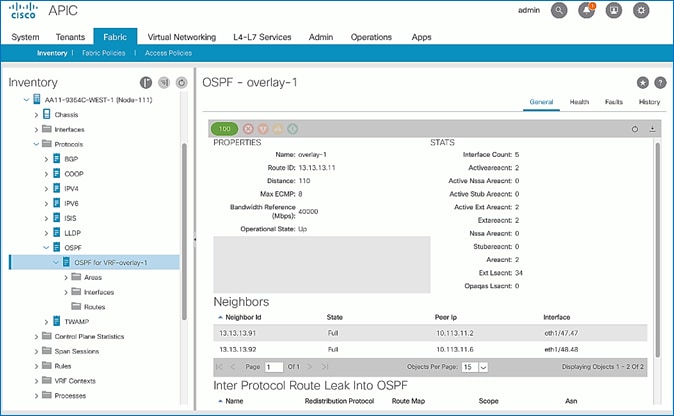

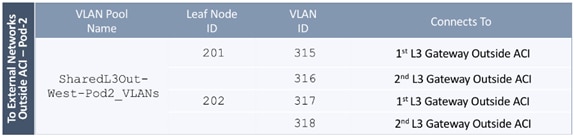

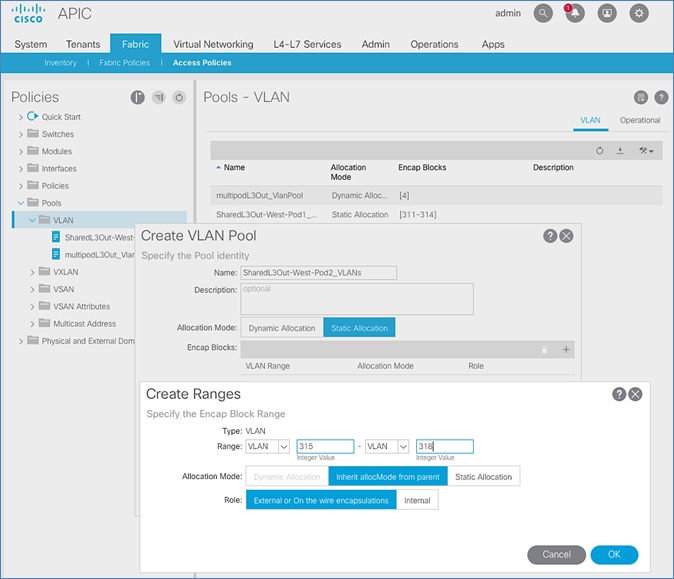

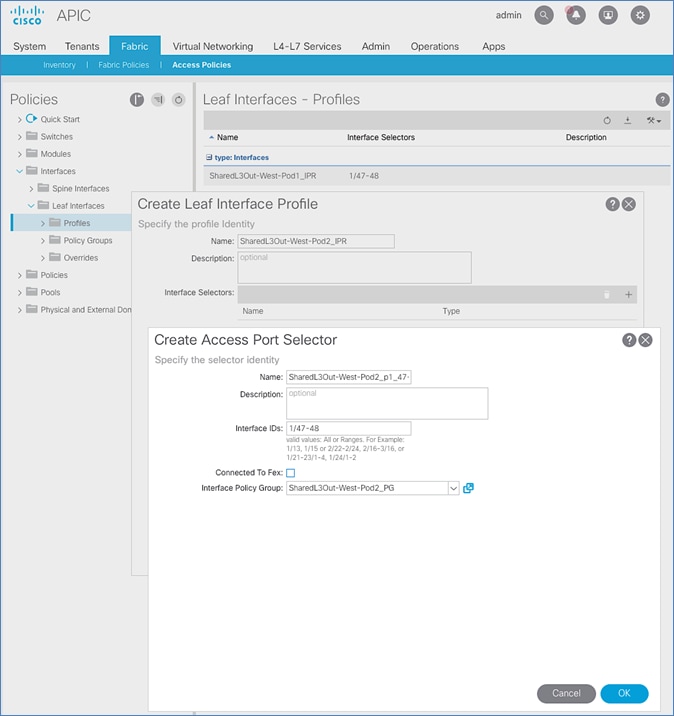

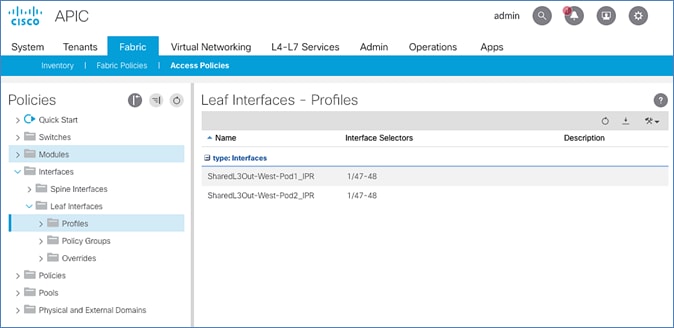

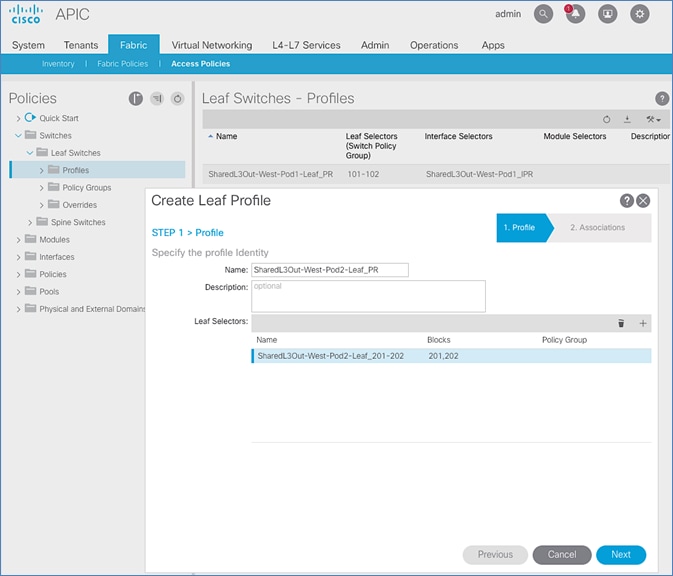

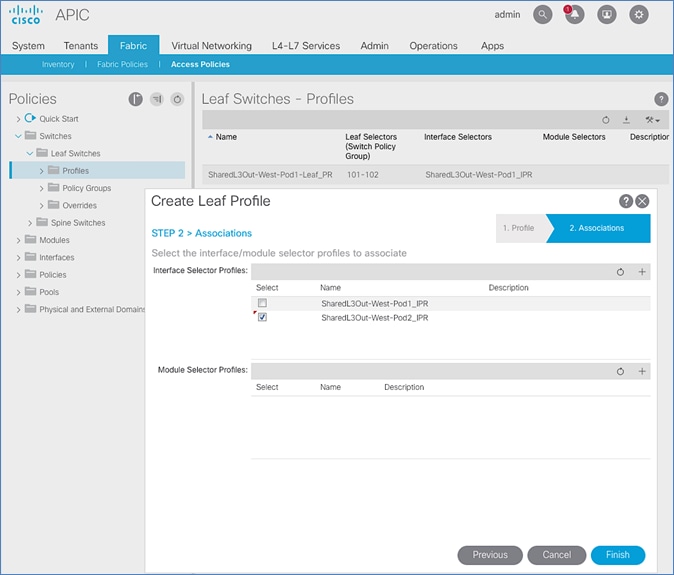

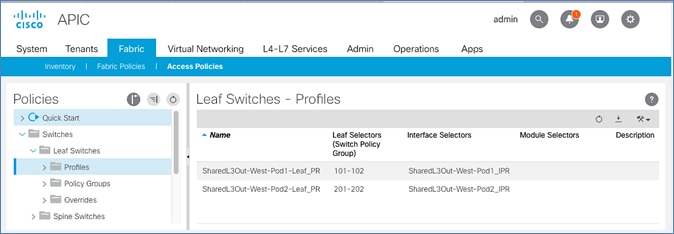

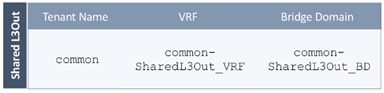

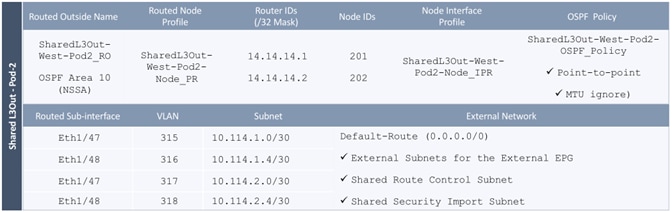

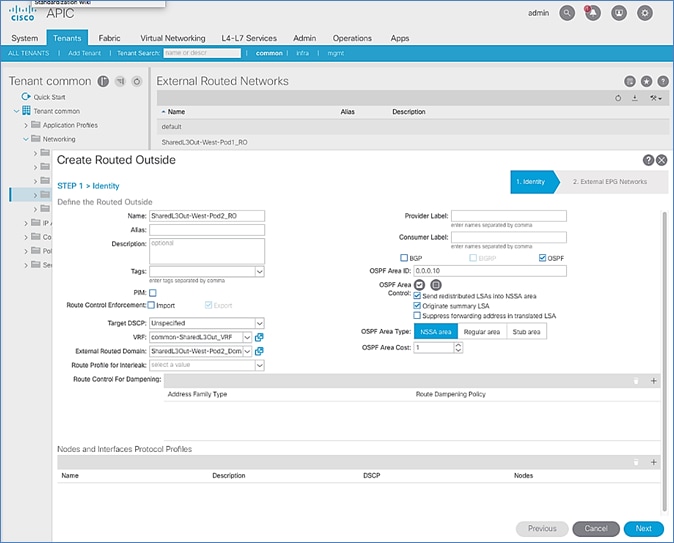

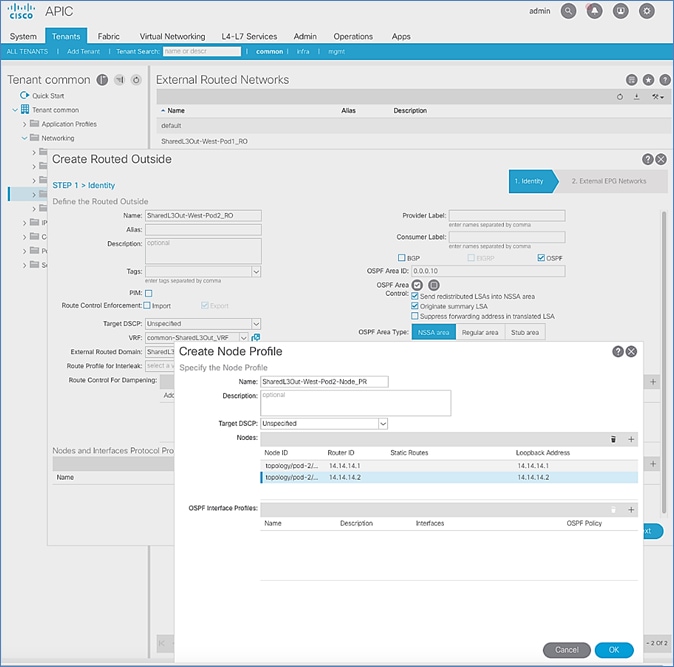

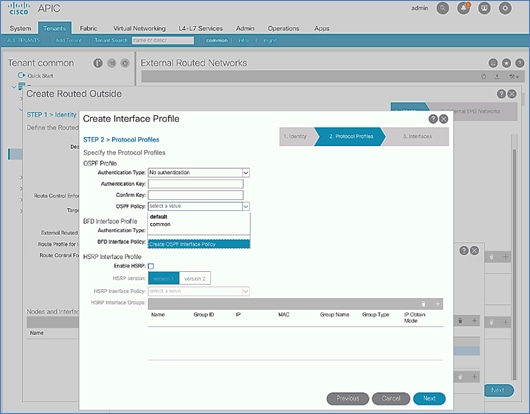

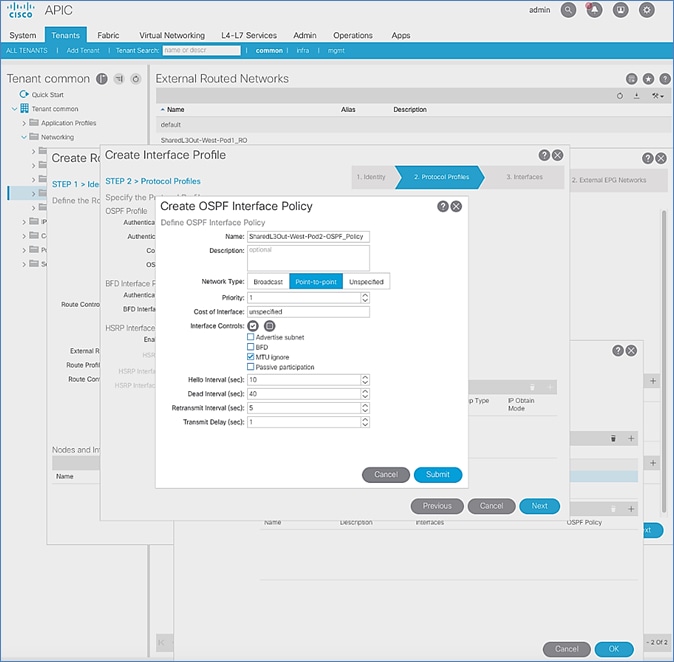

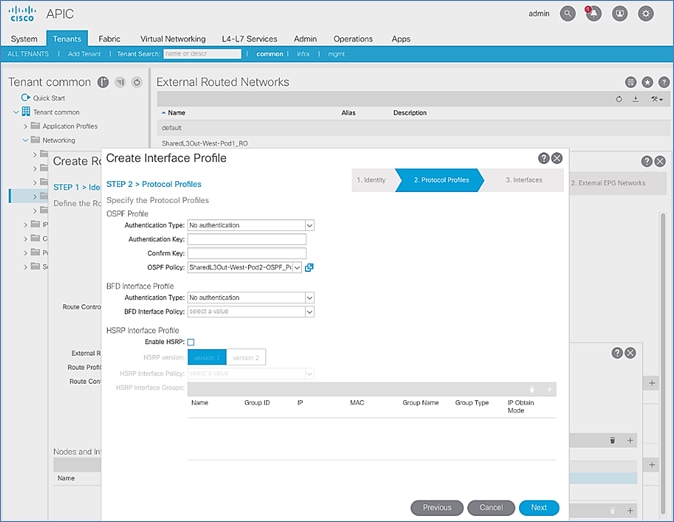

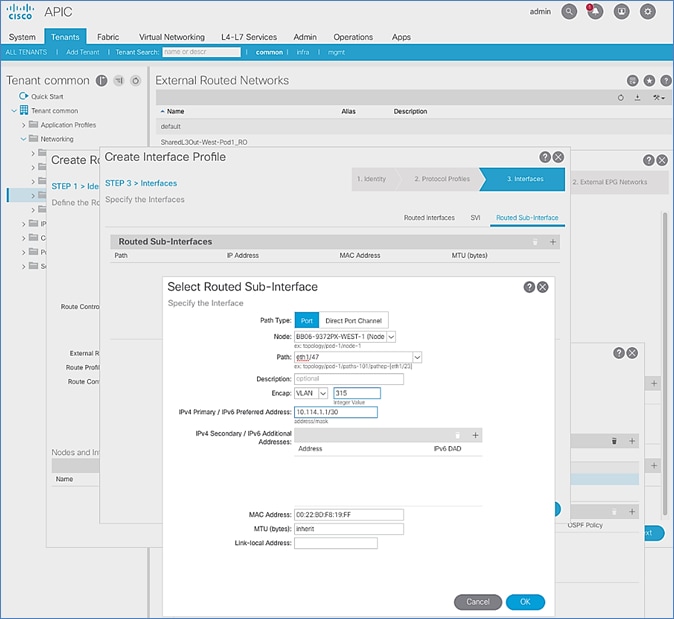

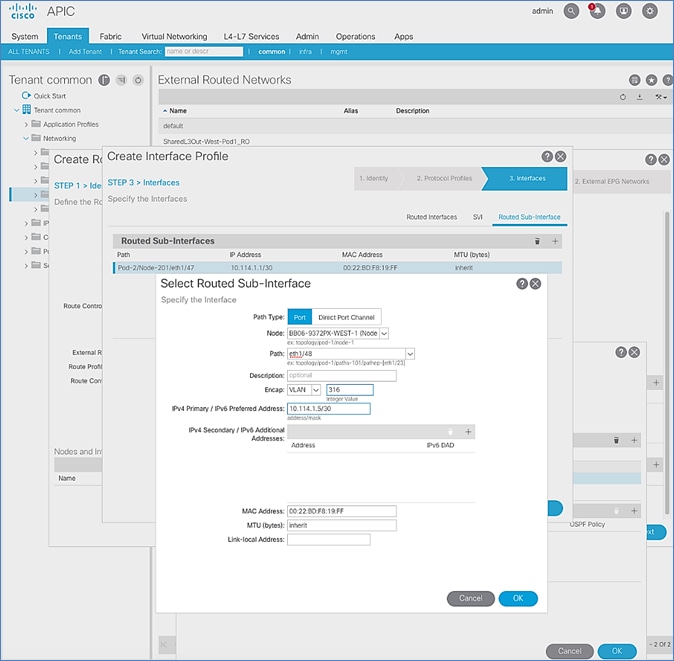

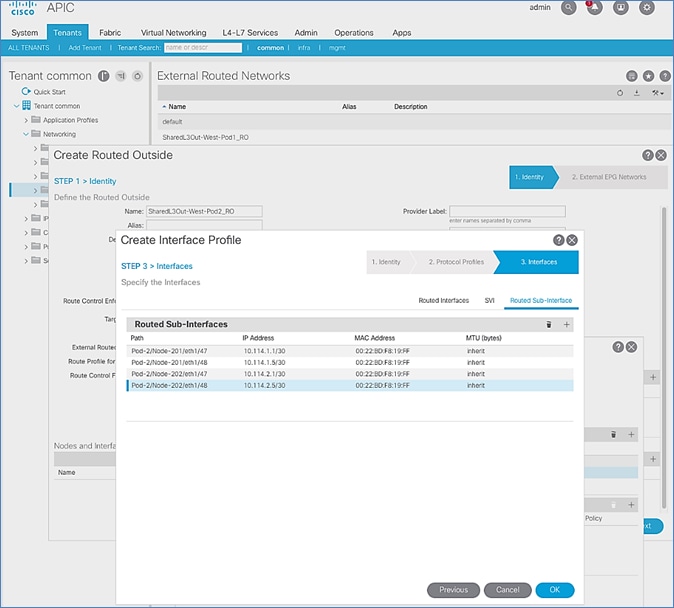

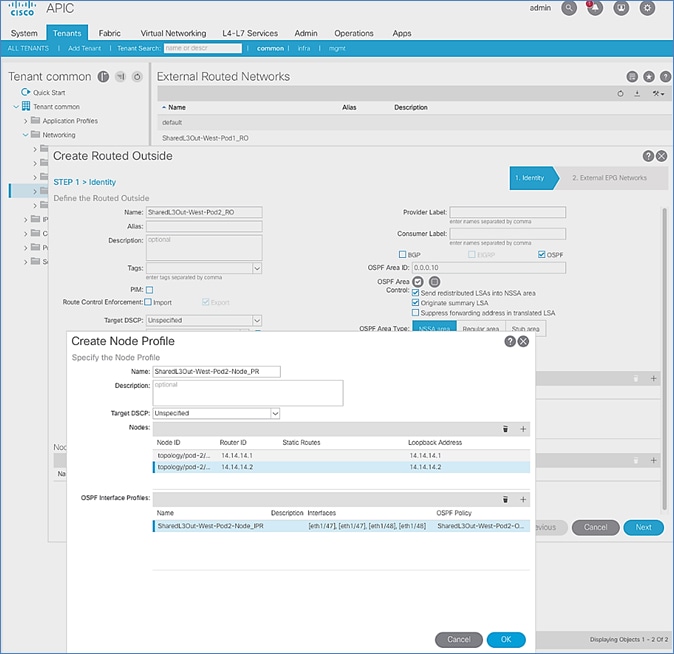

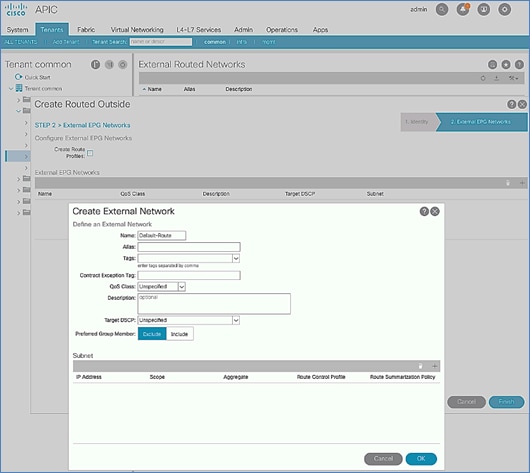

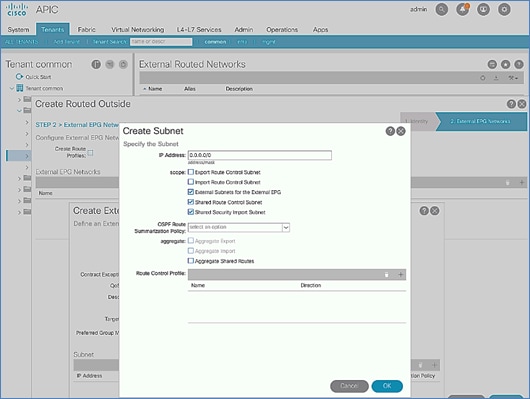

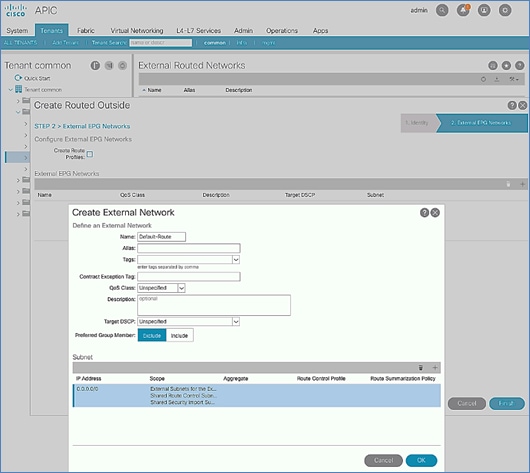

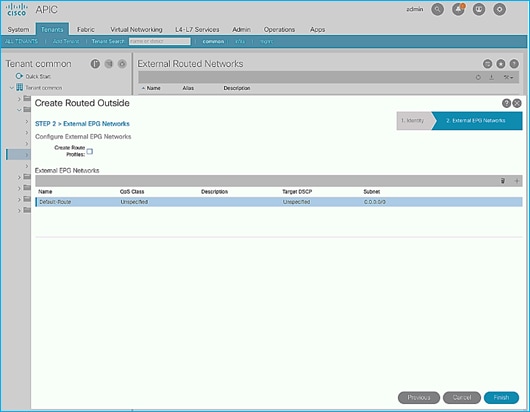

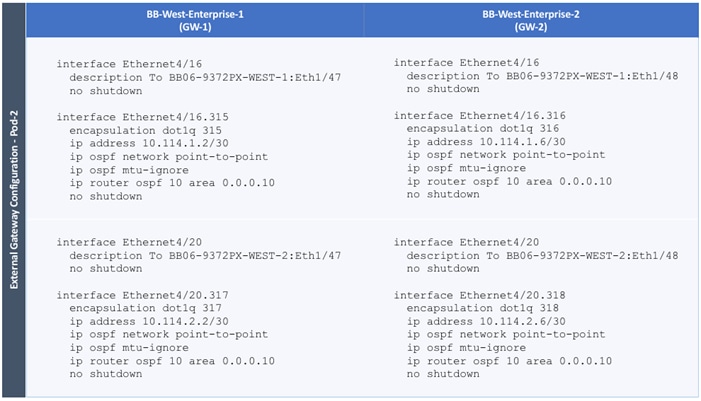

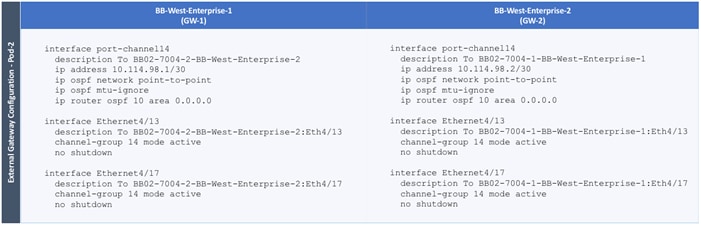

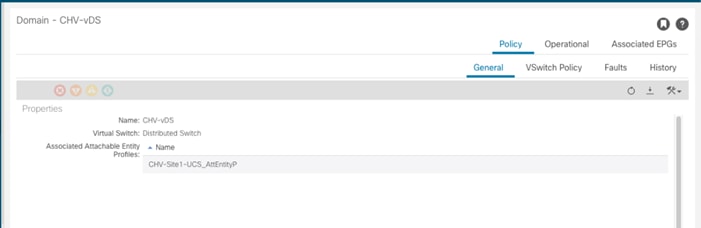

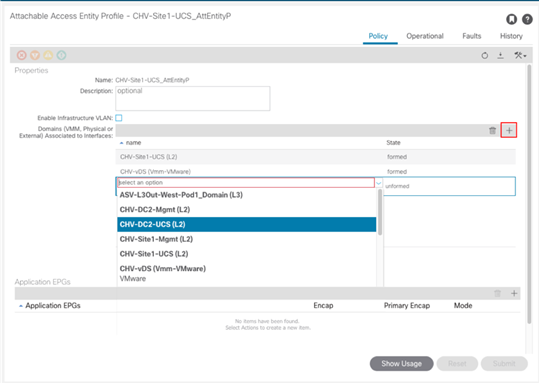

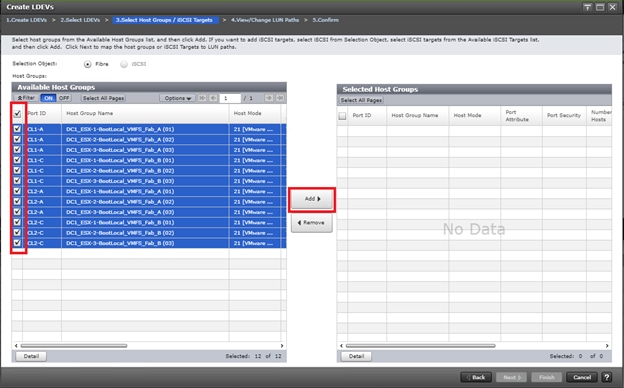

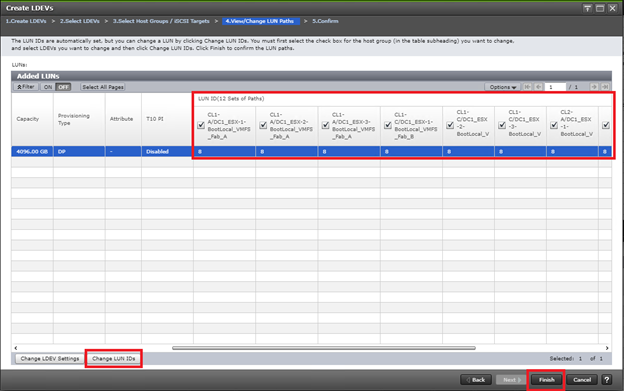

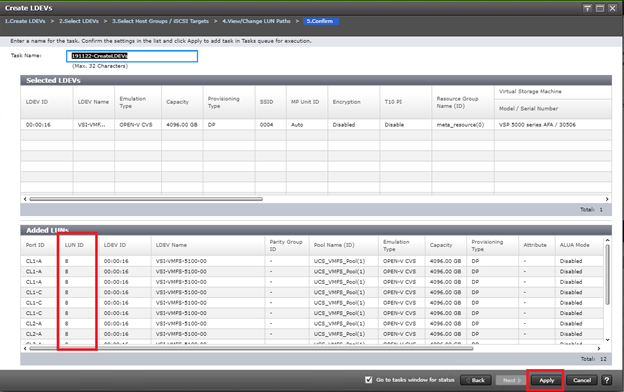

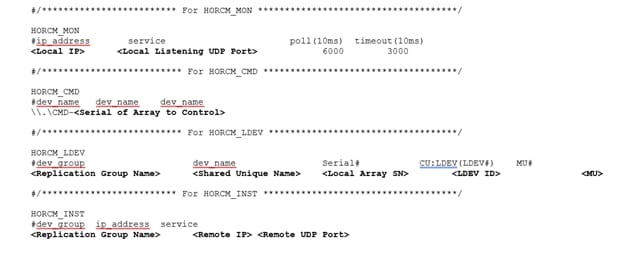

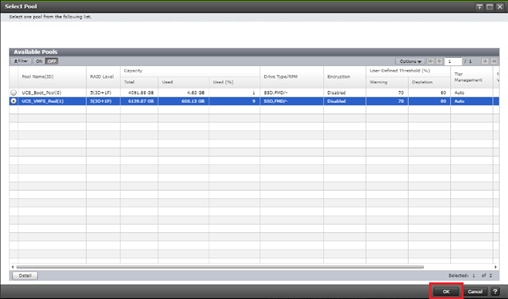

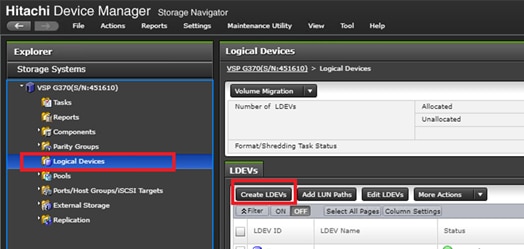

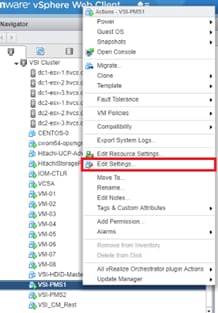

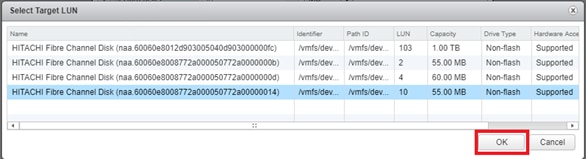

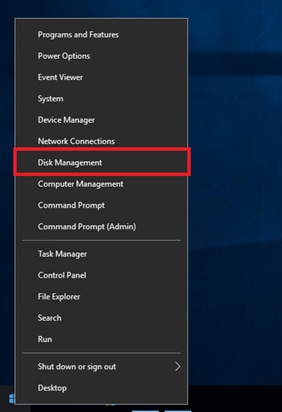

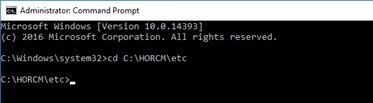

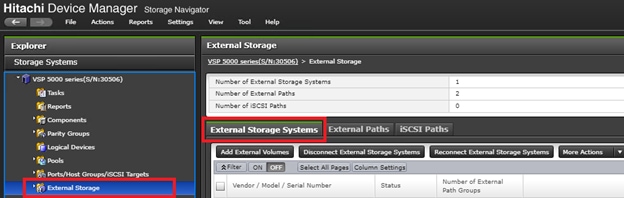

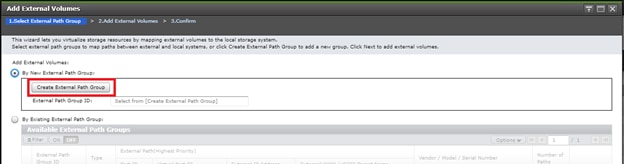

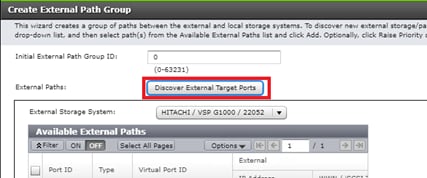

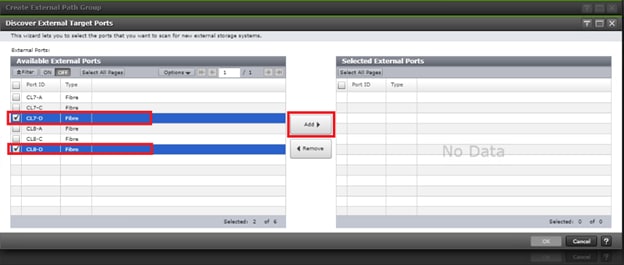

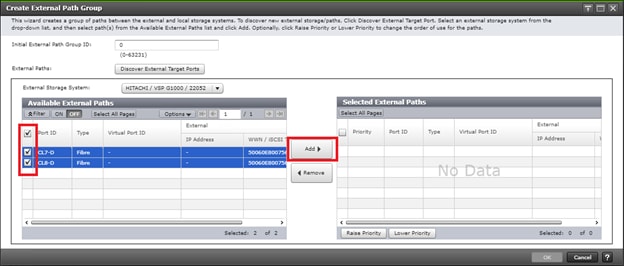

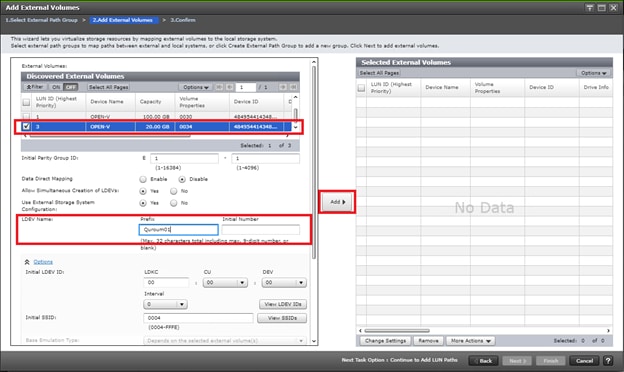

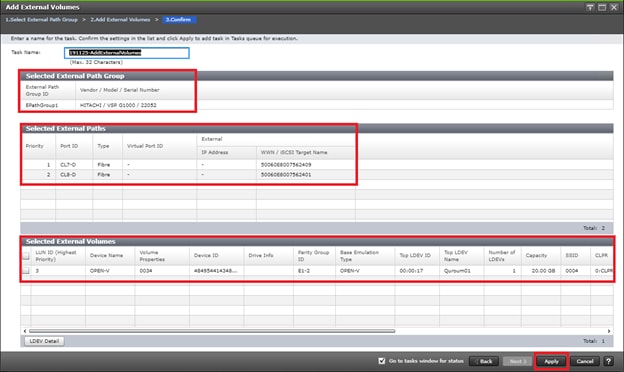

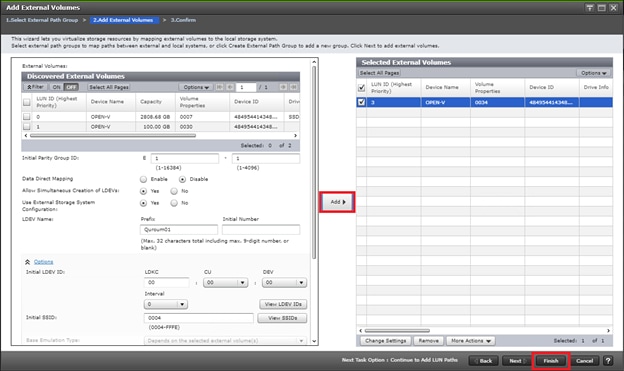

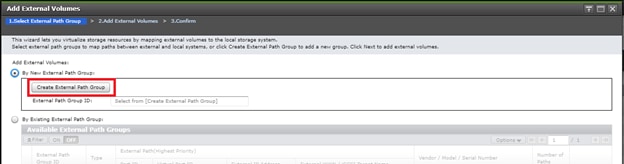

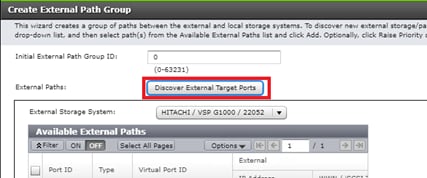

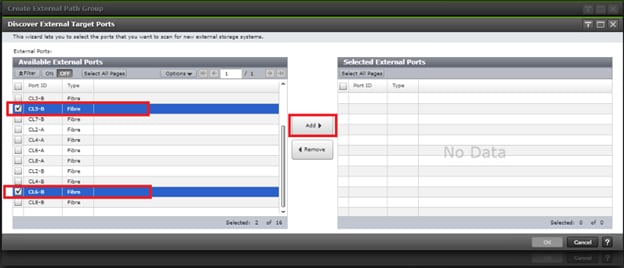

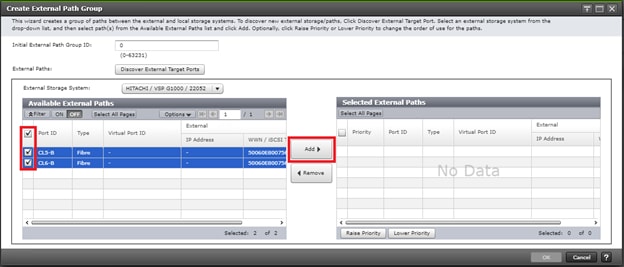

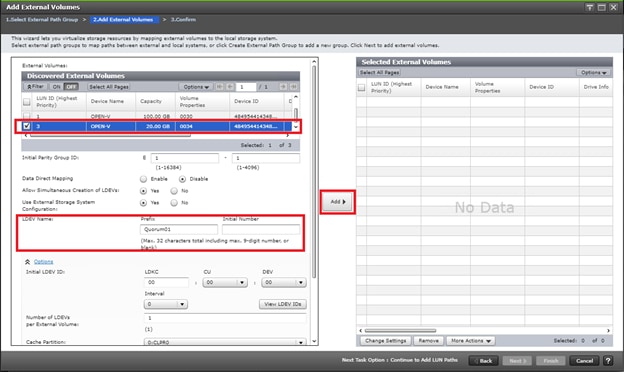

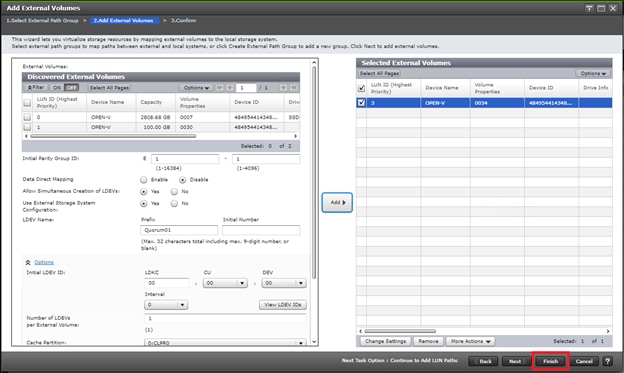

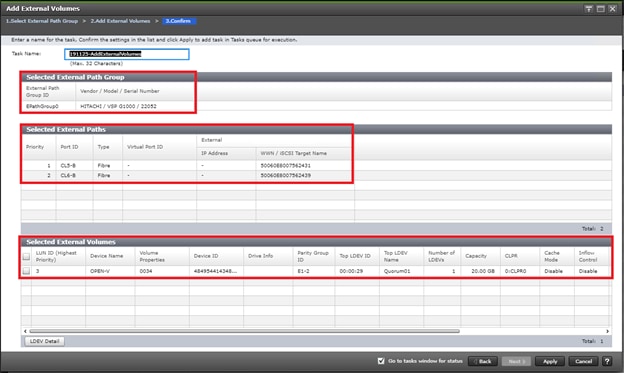

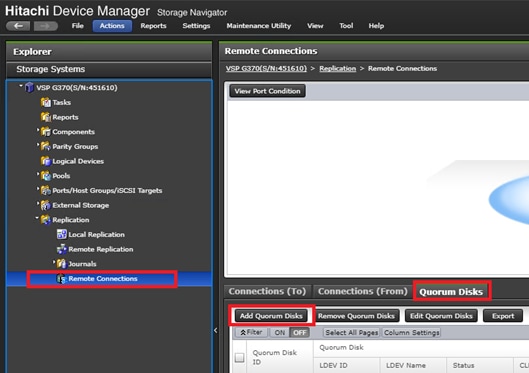

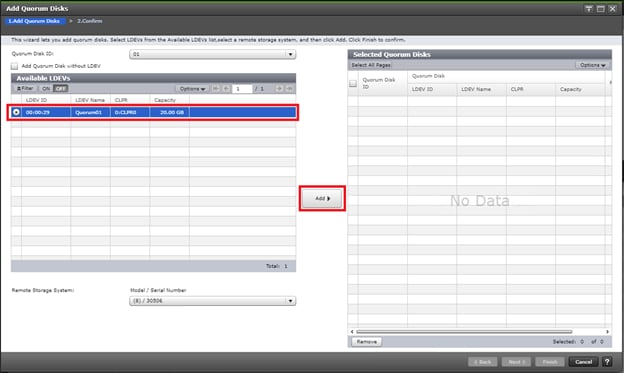

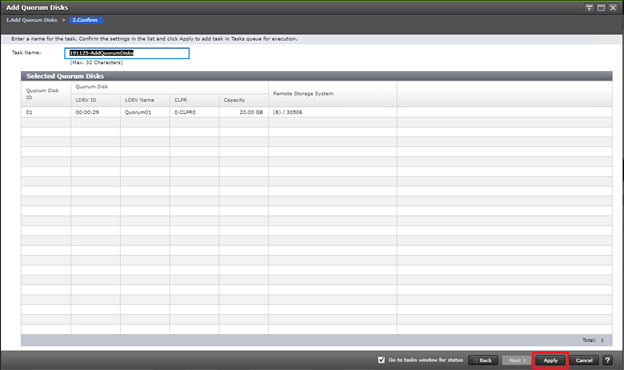

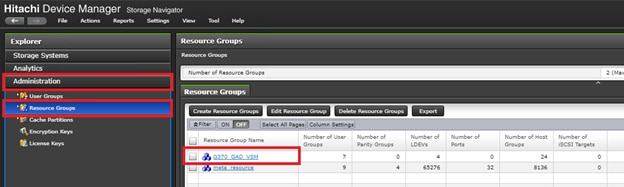

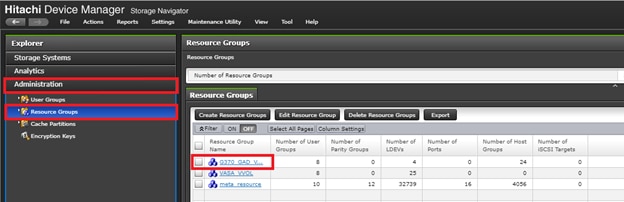

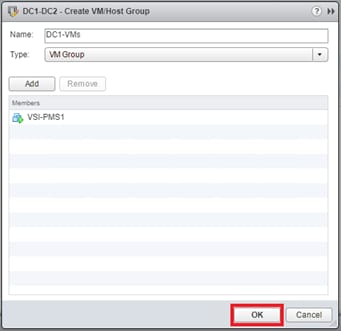

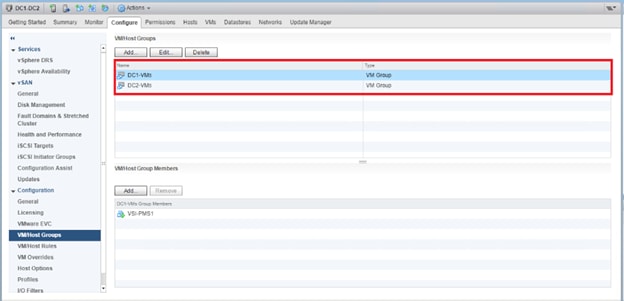

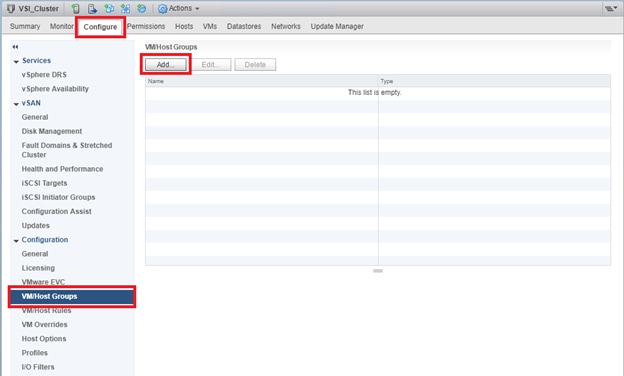

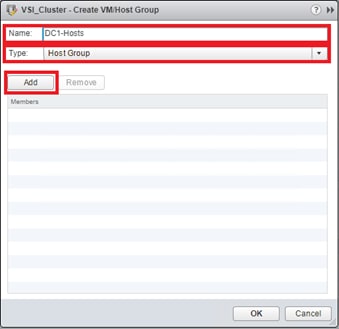

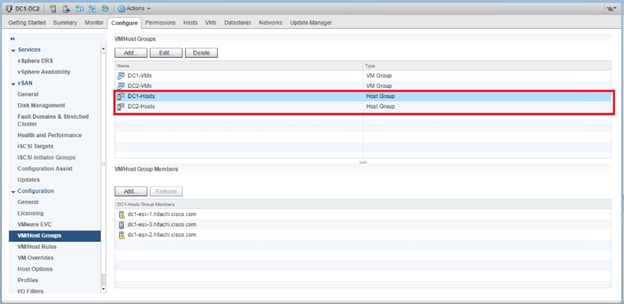

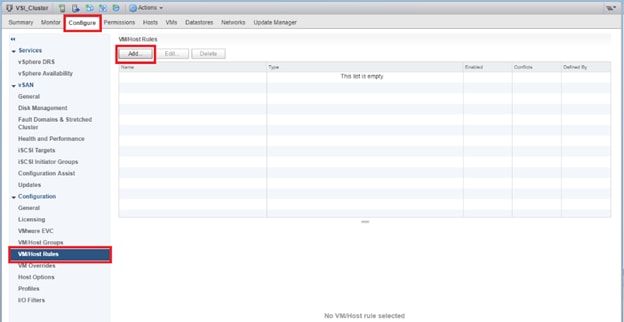

Run Configuration Wizard for IPN Connectivity