Cisco and Hitachi Adaptive Solutions for Converged Infrastructure with Cisco ACI

Deployment Guide for Cisco and Hitachi Adaptive Solutions with ACI 4.1 and VMware vSphere 6.7 for Single Site Deployment

Last Updated: September 17, 2019

About the Cisco Validated Design Program

The Cisco Validated Design (CVD) program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, go to:

http://www.cisco.com/go/designzone.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

CCDE, CCENT, Cisco Eos, Cisco Lumin, Cisco Nexus, Cisco StadiumVision, Cisco TelePresence, Cisco WebEx, the Cisco logo, DCE, and Welcome to the Human Network are trademarks; Changing the Way We Work, Live, Play, and Learn and Cisco Store are service marks; and Access Registrar, Aironet, AsyncOS, Bringing the Meeting To You, Catalyst, CCDA, CCDP, CCIE, CCIP, CCNA, CCNP, CCSP, CCVP, Cisco, the Cisco Certified Internetwork Expert logo, Cisco IOS, Cisco Press, Cisco Systems, Cisco Systems Capital, the Cisco Systems logo, Cisco Unified Computing System (Cisco UCS), Cisco UCS B-Series Blade Servers, Cisco UCS C-Series Rack Servers, Cisco UCS S-Series Storage Servers, Cisco UCS Manager, Cisco UCS Management Software, Cisco Unified Fabric, Cisco Application Centric Infrastructure, Cisco Nexus 9000 Series, Cisco Nexus 7000 Series. Cisco Prime Data Center Network Manager, Cisco NX-OS Software, Cisco MDS Series, Cisco Unity, Collaboration Without Limitation, EtherFast, EtherSwitch, Event Center, Fast Step, Follow Me Browsing, FormShare, GigaDrive, HomeLink, Internet Quotient, IOS, iPhone, iQuick Study, LightStream, Linksys, MediaTone, MeetingPlace, MeetingPlace Chime Sound, MGX, Networkers, Networking Academy, Network Registrar, PCNow, PIX, PowerPanels, ProConnect, ScriptShare, SenderBase, SMARTnet, Spectrum Expert, StackWise, The Fastest Way to Increase Your Internet Quotient, TransPath, WebEx, and the WebEx logo are registered trademarks of Cisco Systems, Inc. and/or its affiliates in the United States and certain other countries.

All other trademarks mentioned in this document or website are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (0809R)

© 2019 Cisco Systems, Inc. All rights reserved.

Table of Contents

Deployment Hardware and Software

Hardware and Software Versions

Cisco Application Policy Infrastructure Controller (APIC) Verification

Initial ACI Fabric Setup Verification

Set Up Out-of-Band Management IP Addresses for New Leaf Switches

Verify Time Zone and NTP Server

Verify Fabric Wide Enforce Subnet Check for IP and MAC Learning

Create LLDP Interface Policies

Create BPDU Filter/Guard Policies

Create Virtual Port Channels (vPCs)

vPC – Cisco UCS Fabric Interconnects

Deploy Shared Layer 3 Connectivity to Outside Networks – Pod-1

Create VLAN Pool for External Routed Domain

Configure Domain Type for External Routed Domain

Create AAEP for External Routed Domain

Configure Interfaces to External Routed Domain

Configure Tenant Networking for Shared L3Out

Configure External Routed Networks under Tenant Common

Create Contracts for External Routed Networks from Tenant (common)

Provide Contracts for External Routed Networks from Tenant (common)

Configure External Gateways in the Outside Network

Create Application Profile for Infrastructure

Create Application Profile for Host Connectivity

Initial MDS Configuration Dialogue

Cisco MDS Switch Configuration

Create Port Descriptions - Fabric B

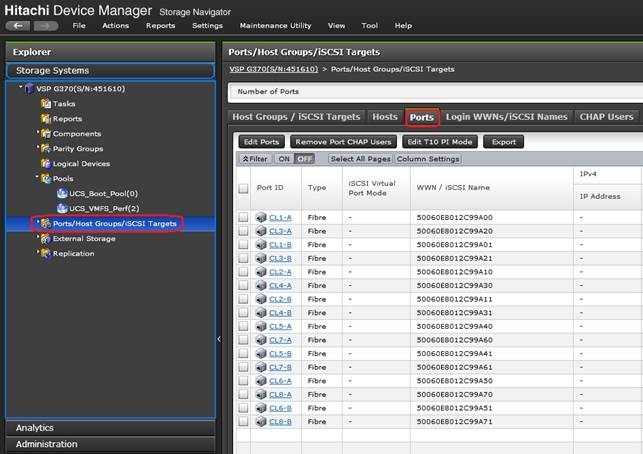

Configure Fibre Channel Ports on Hitachi Virtual Storage Platform

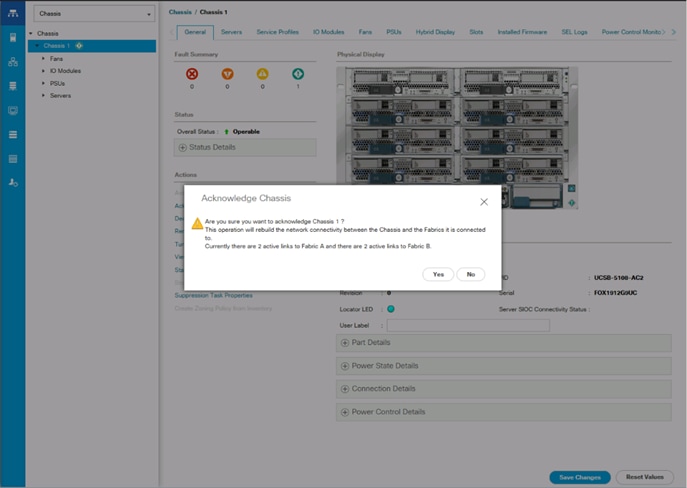

Cisco UCS Compute Configuration

Upgrade Cisco UCS Manager Software to Version 4.0(4b)

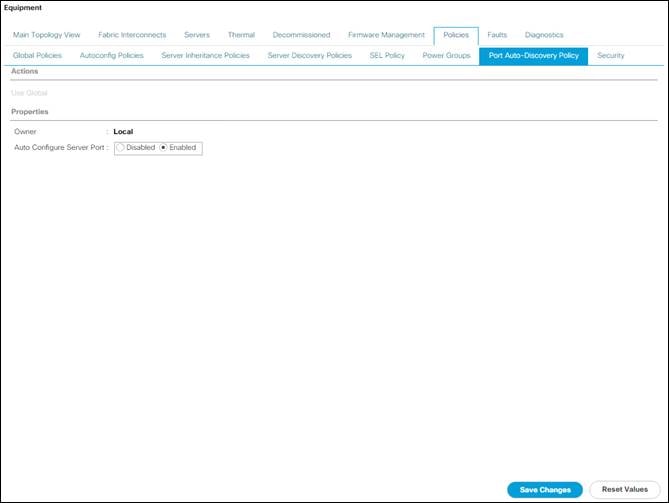

Enable Port Auto-Discovery Policy

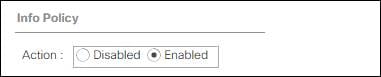

Enable Info Policy for Neighbor Discovery

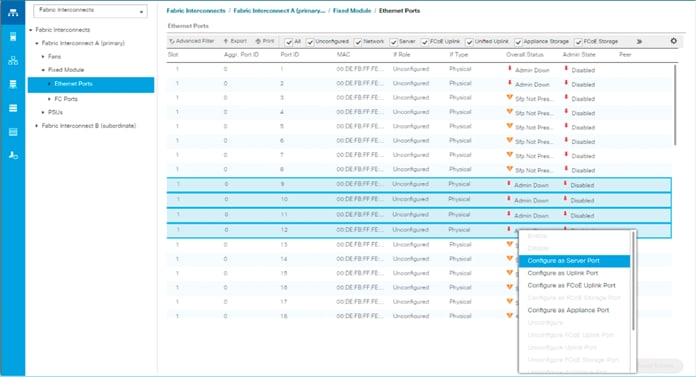

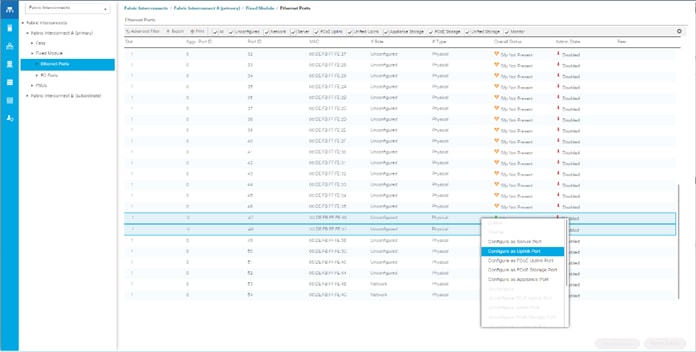

Enable Server and Uplink Ports

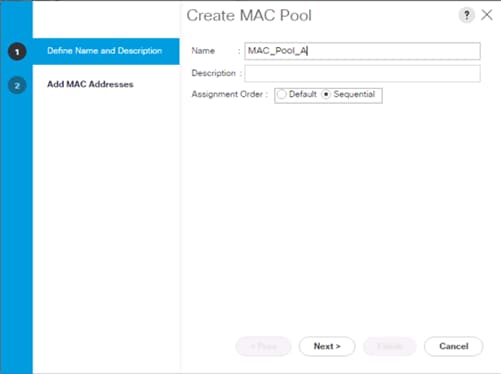

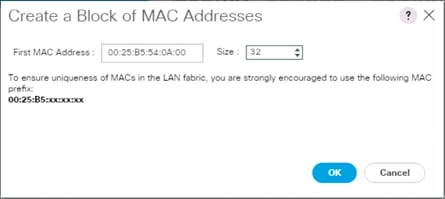

Configure Cisco UCS LAN Connectivity

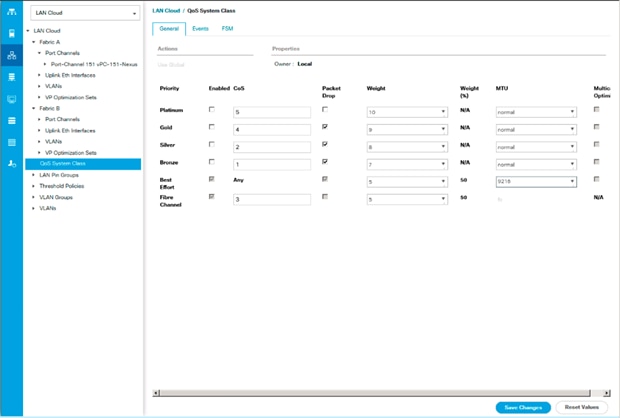

Set Jumbo Frames in Cisco UCS Fabric

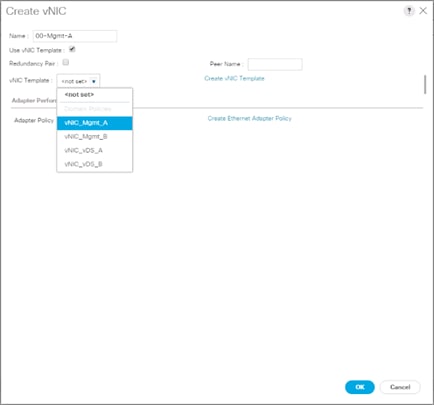

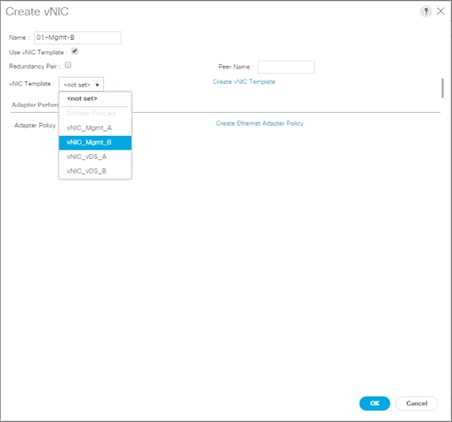

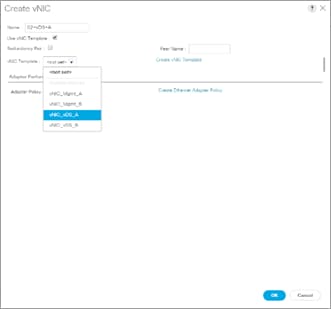

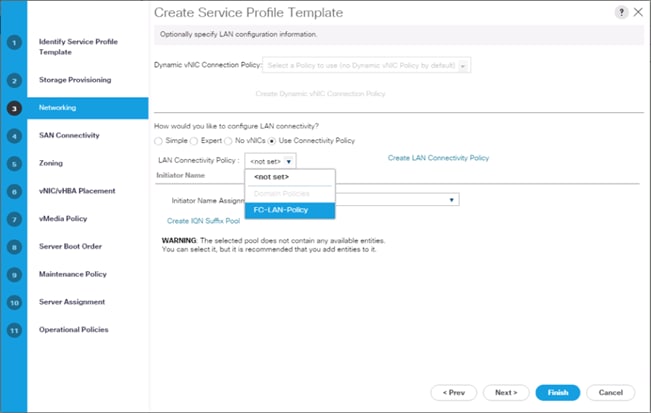

Create LAN Connectivity Policy

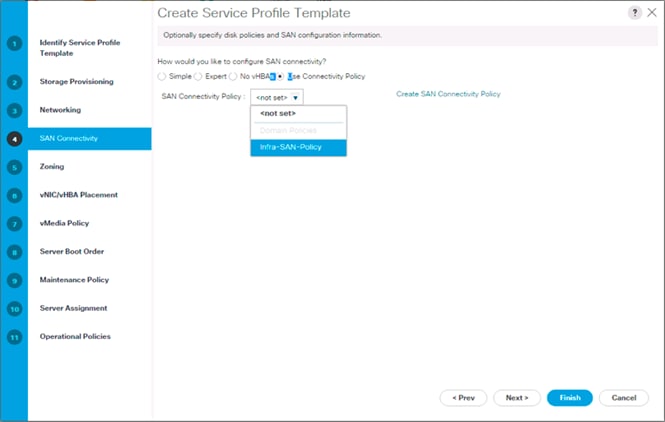

Create SAN Connectivity Policy

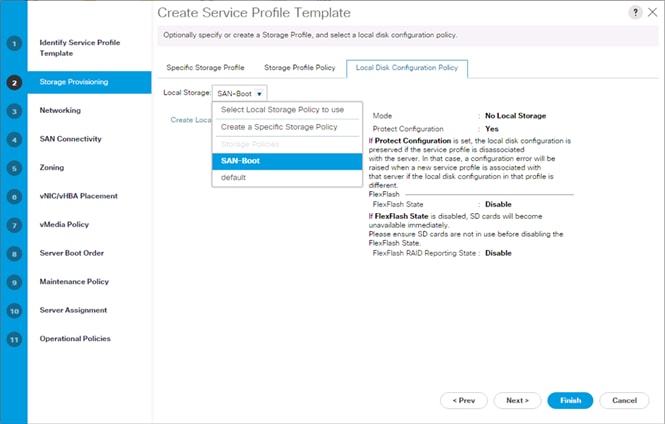

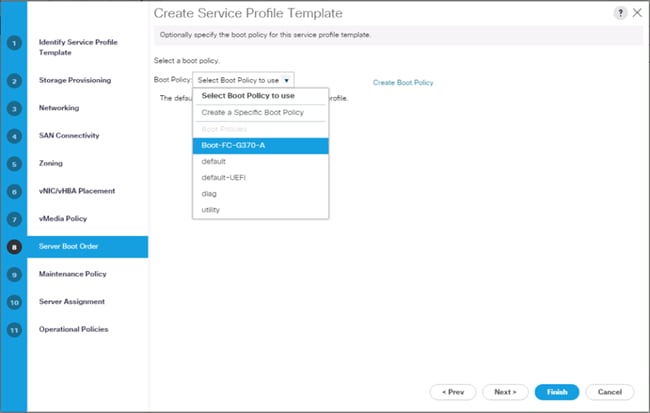

Create Service Profile Template

Create vMedia Service Profile Template

Collect UCS Host vHBA Information for Zoning

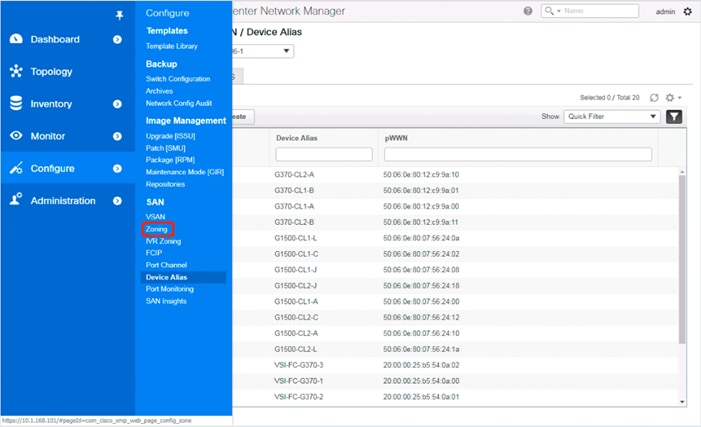

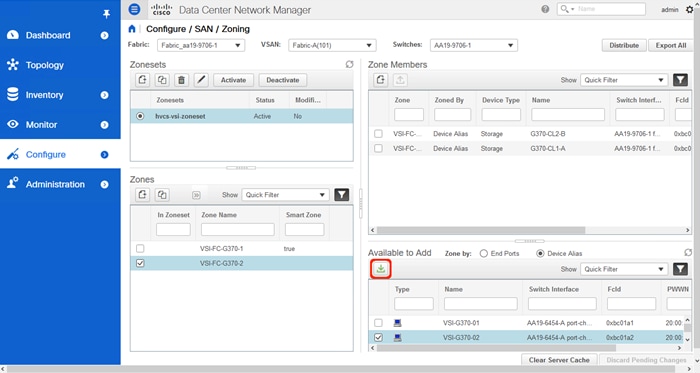

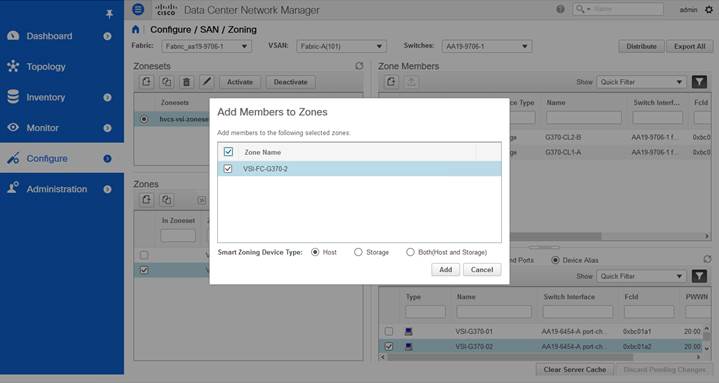

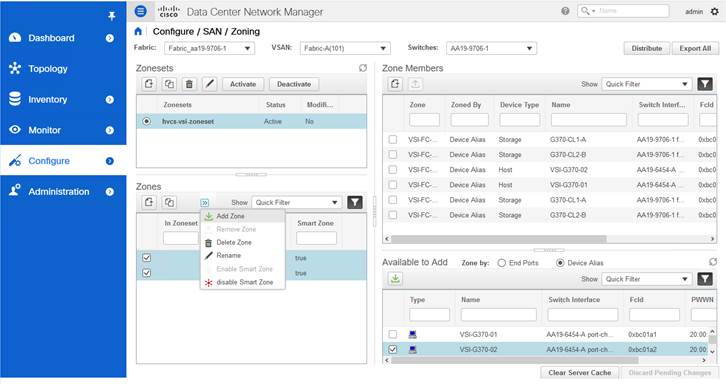

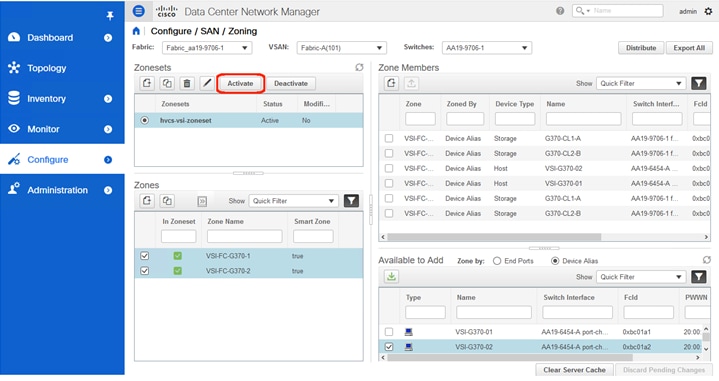

DCNM Switch Registration and Zoning(Optional)

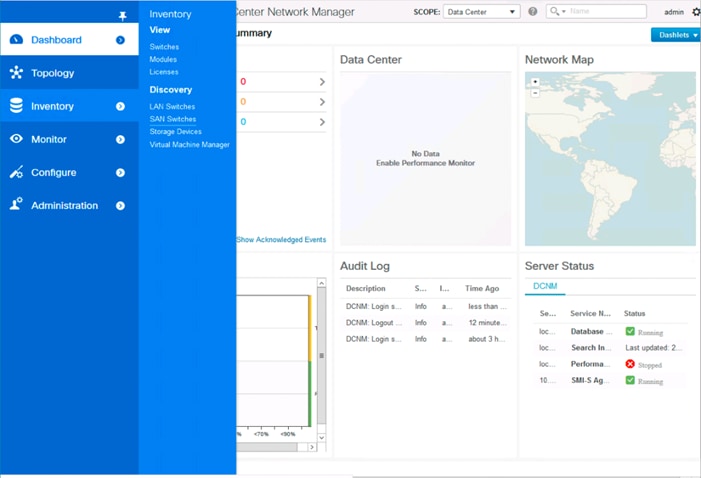

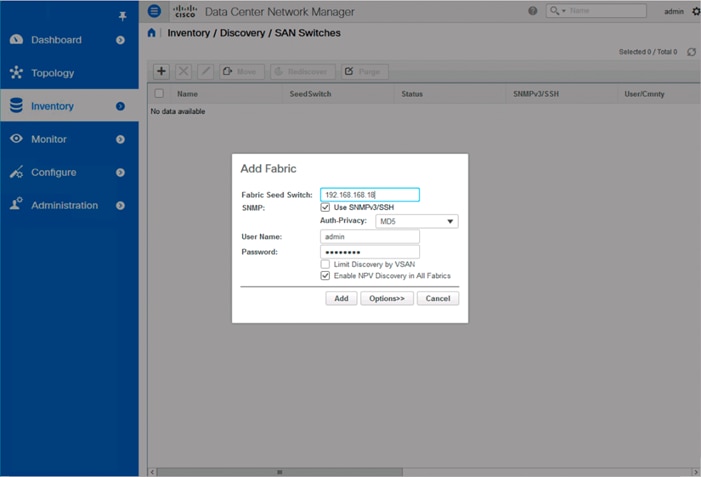

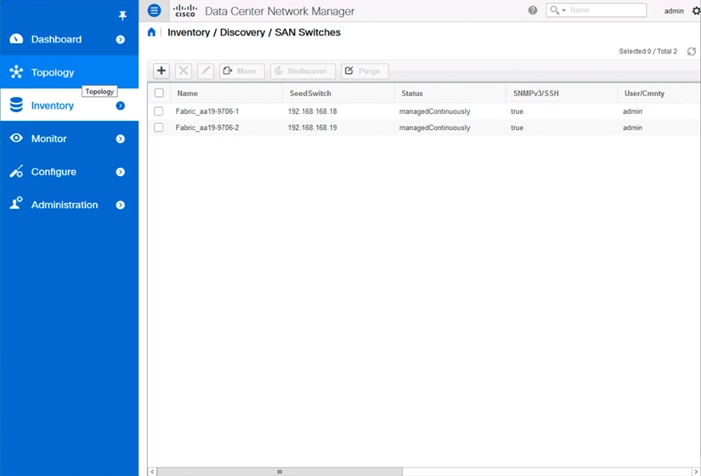

Connect to DCNM and Registering Switches

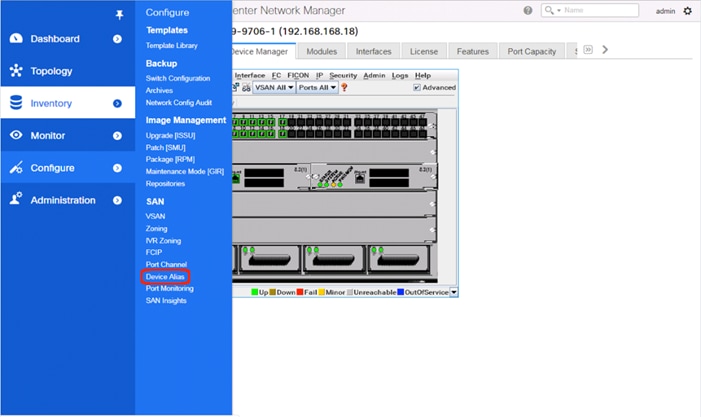

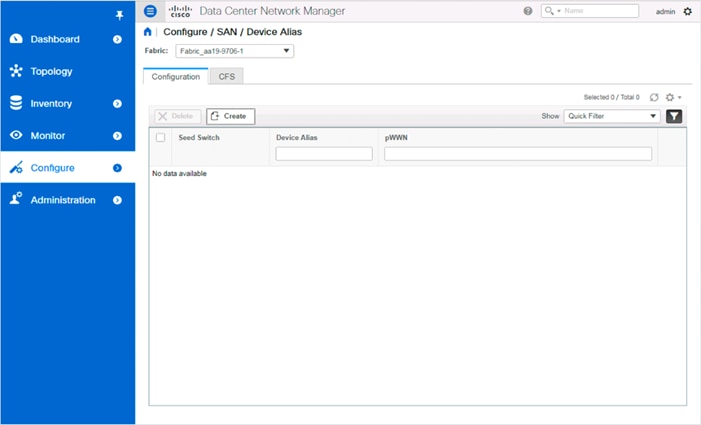

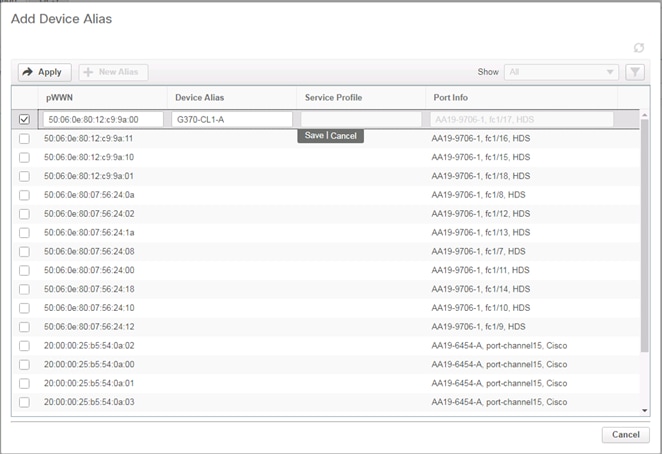

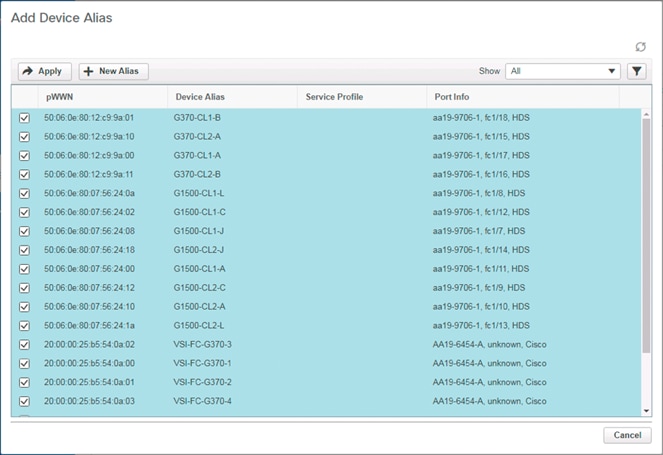

Configuring Device Aliases for the VSP and ESXi hosts

Configure Host Connectivity and Presentation for Storage on Hitachi Virtual Storage Platform

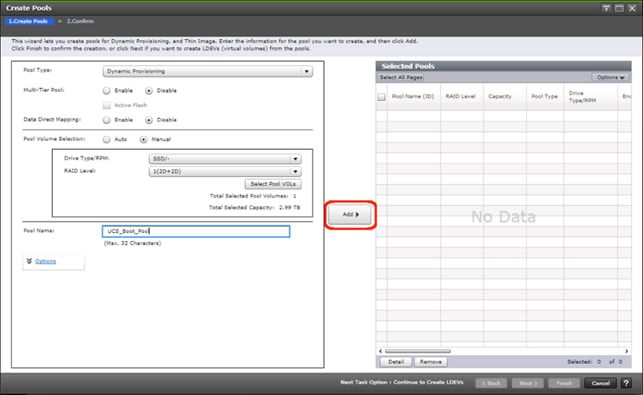

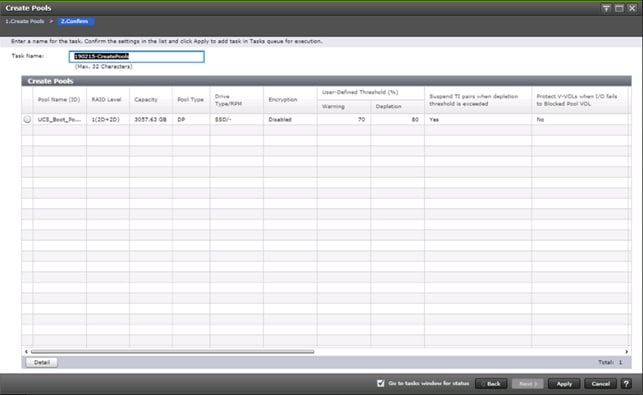

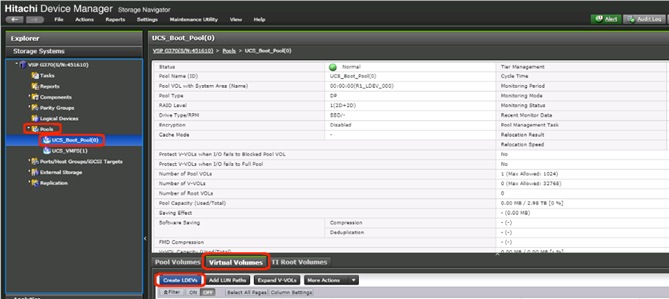

Create a Hitachi Dynamic Provisioning Pool for UCS Server Boot LDEVs

Create a Hitachi Dynamic Provisioning Pool for UCS Server VMFS Volume LDEVs

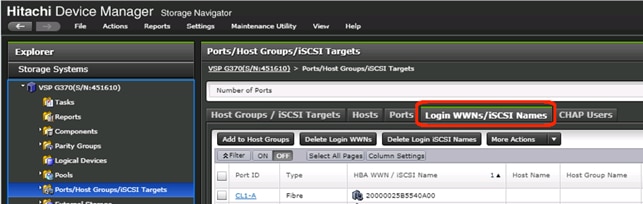

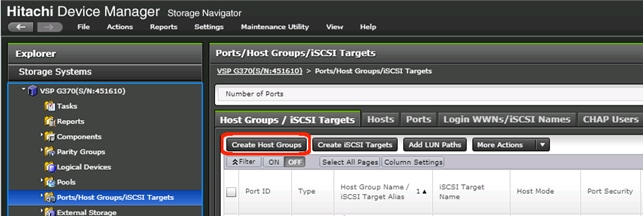

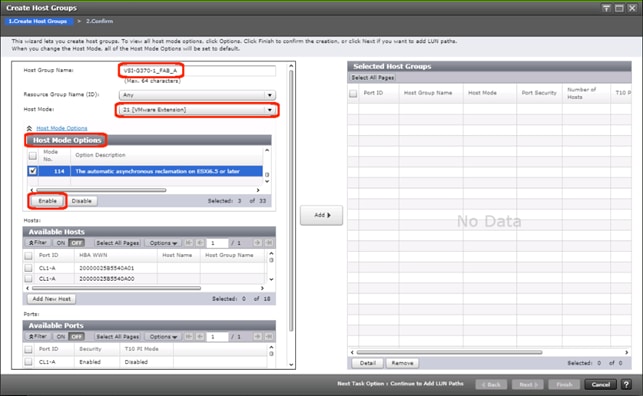

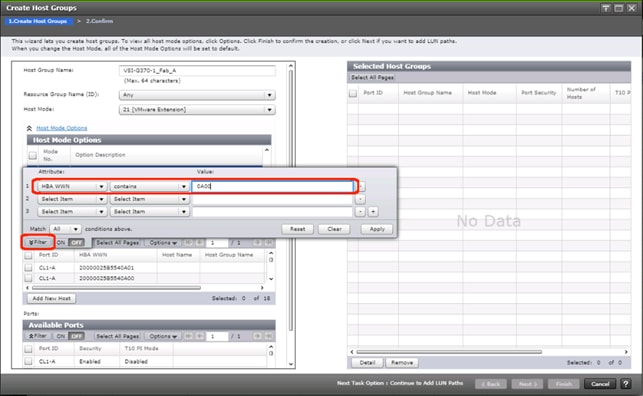

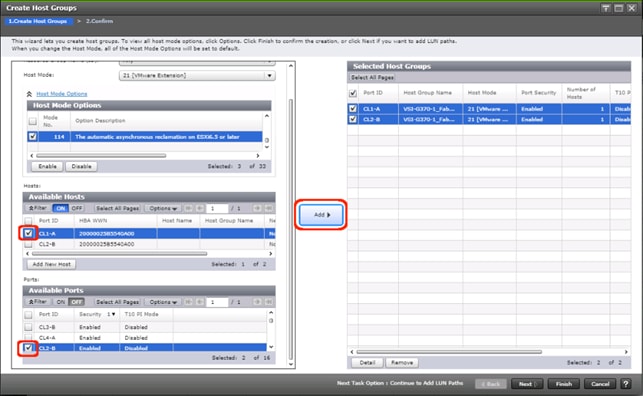

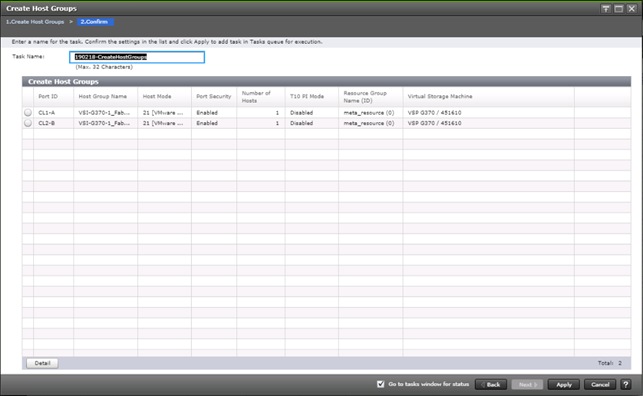

Create Host Groups for Cisco UCS Server vHBAs on Each Fabric

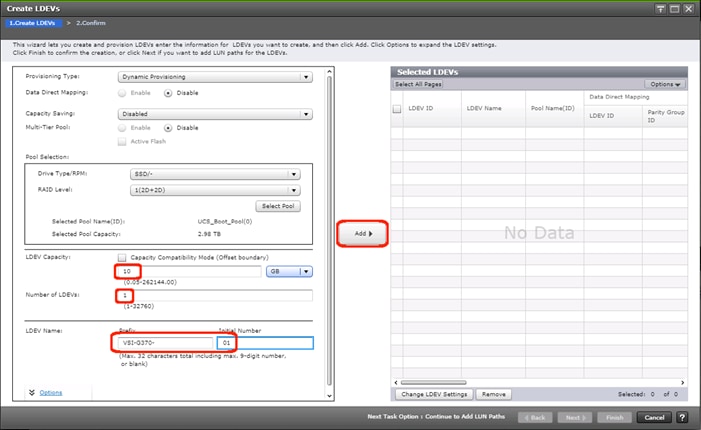

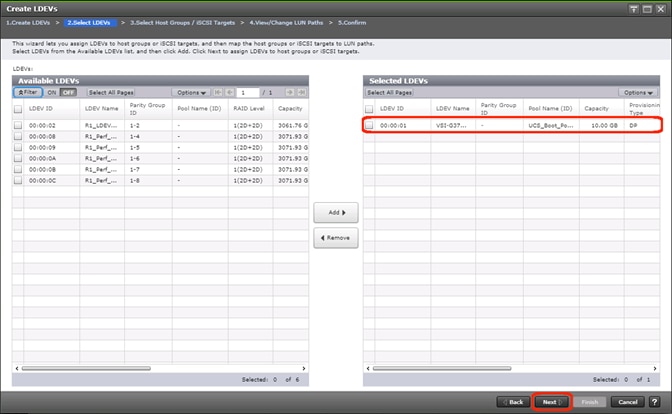

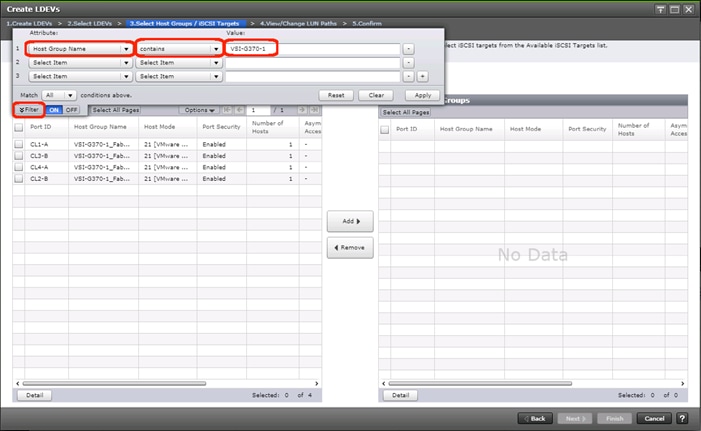

Create Boot LDEVs for Each UCS Service Profile and Add LDEV Paths

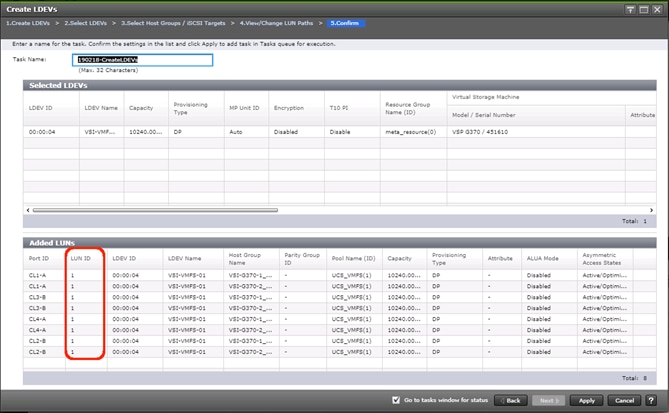

Create Shared VMFS LDEVs and Add LDEV Paths

Download Cisco Custom Image for ESXi 6.7 U2

Log into Cisco UCS 6454 Fabric Interconnect

Set Up VMware ESXi Installation

Set Up Management Networking for ESXi Hosts

Log into VMware ESXi Hosts by Using VMware Host Client

Set Up VMkernel Ports and Virtual Switch

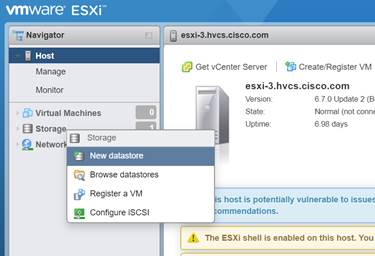

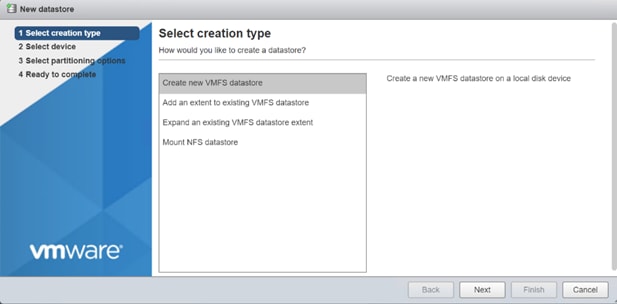

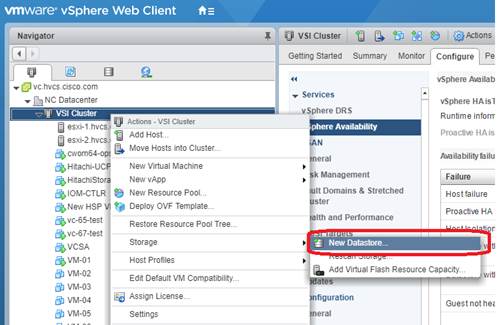

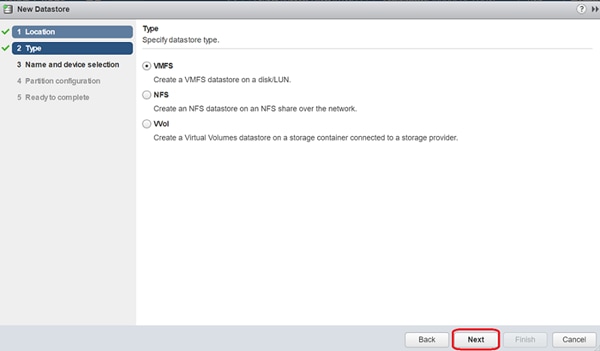

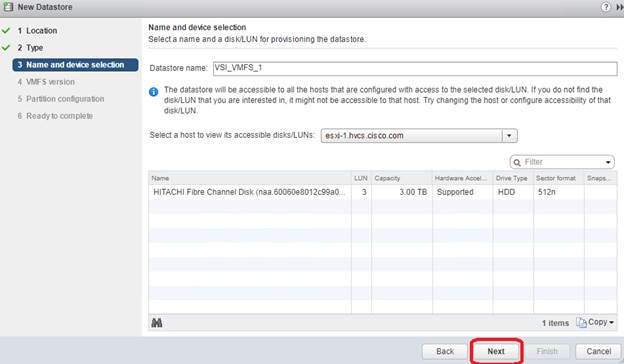

Add Provisioned Datastore to Configured Hosts

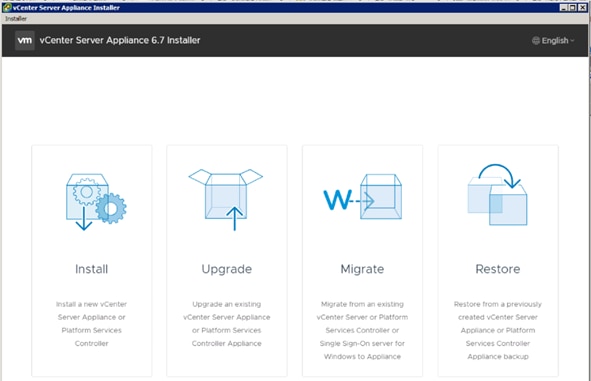

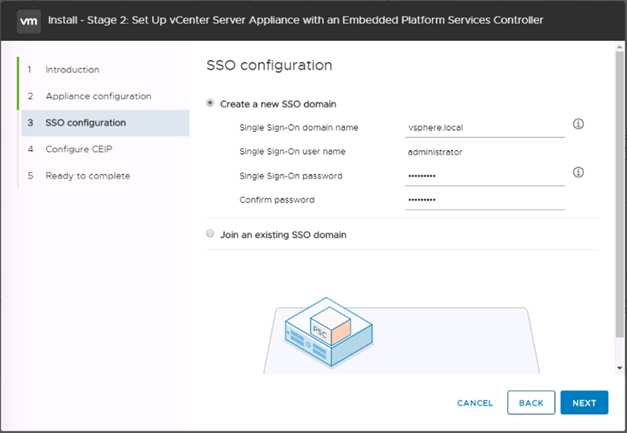

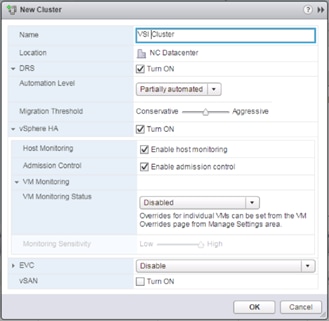

Build the VMware vCenter Server Appliance (optional)

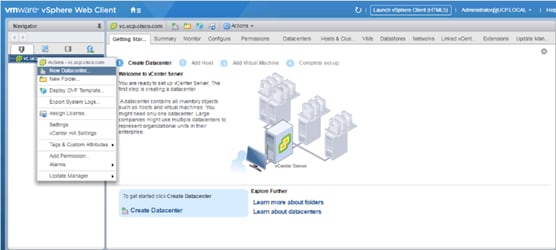

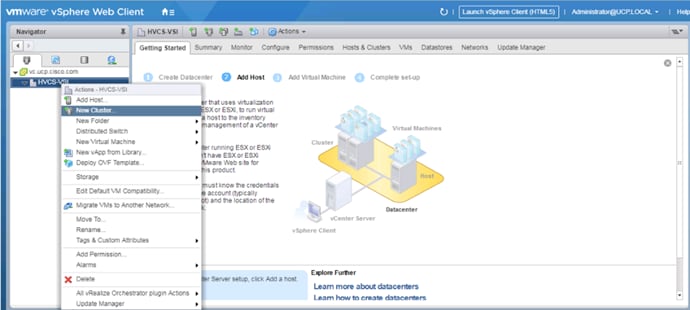

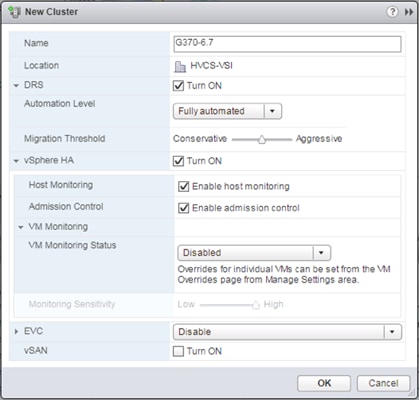

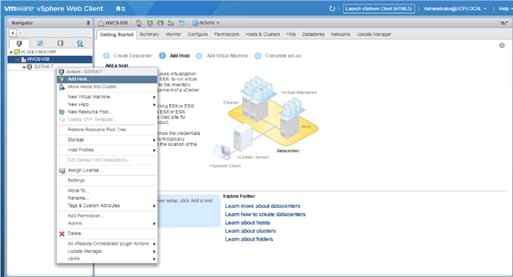

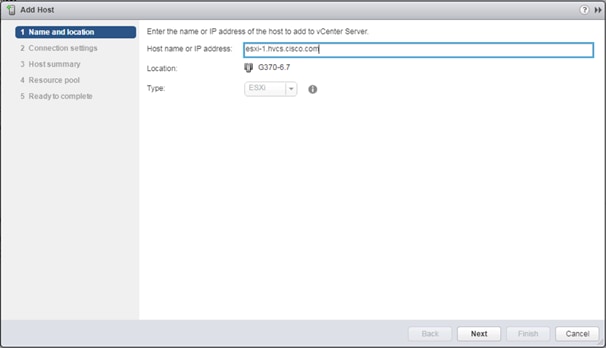

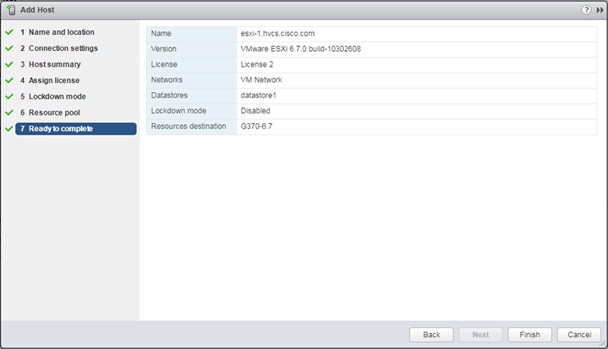

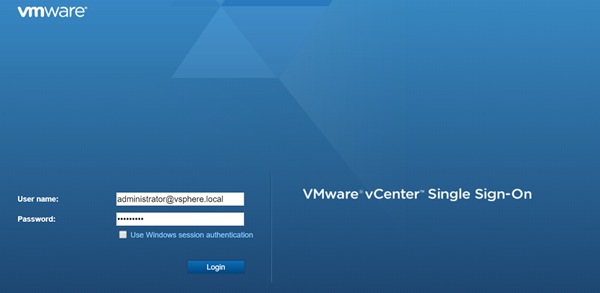

Add the VMware ESXi Hosts Using the VMware vSphere Web Client

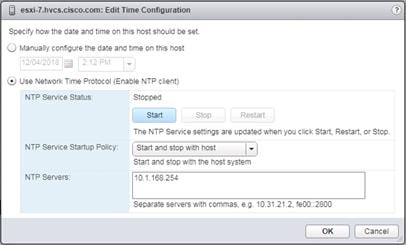

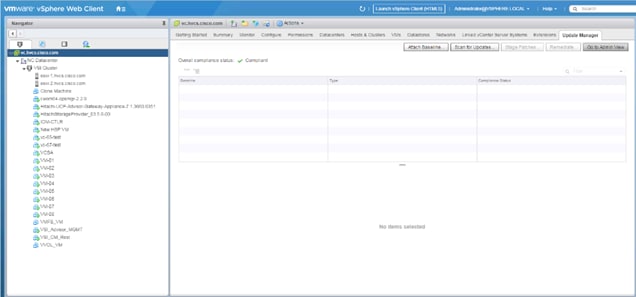

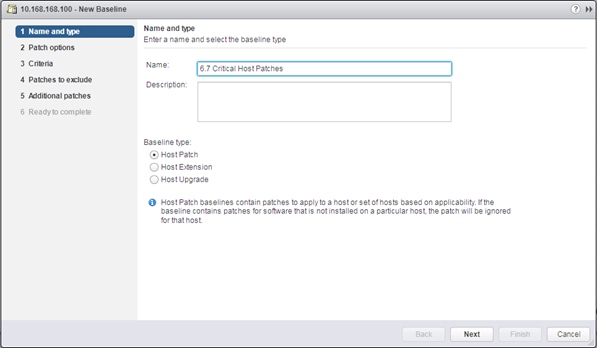

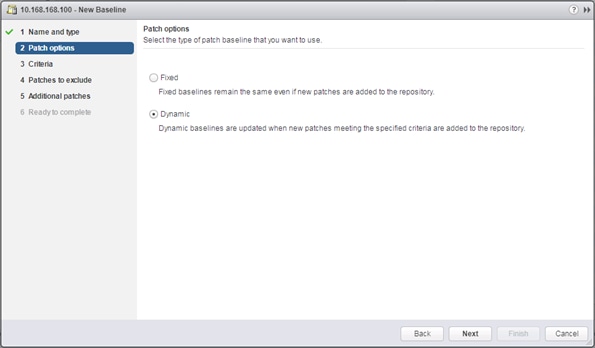

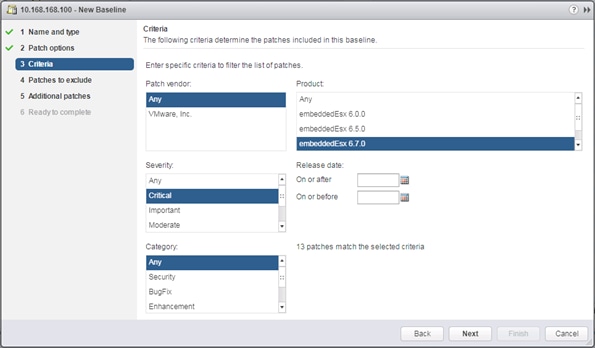

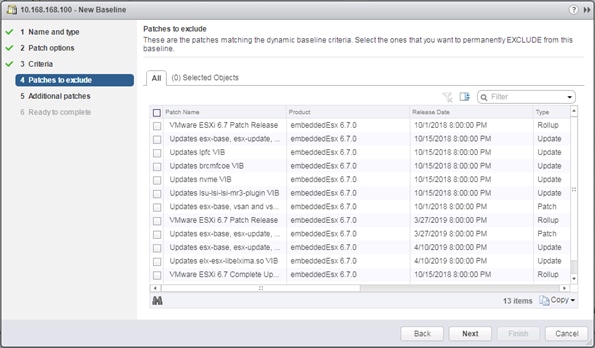

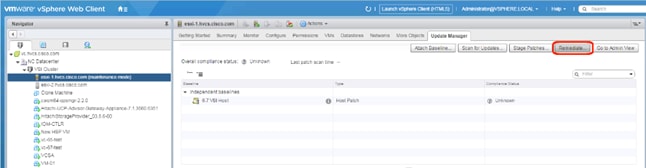

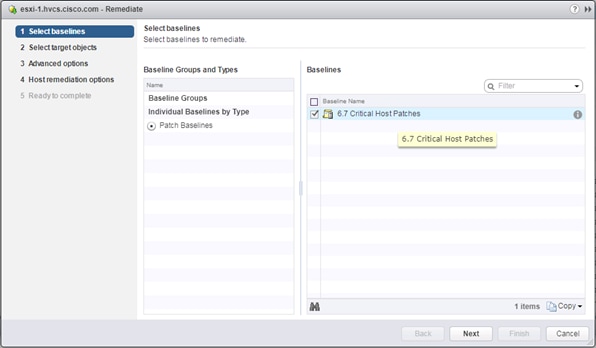

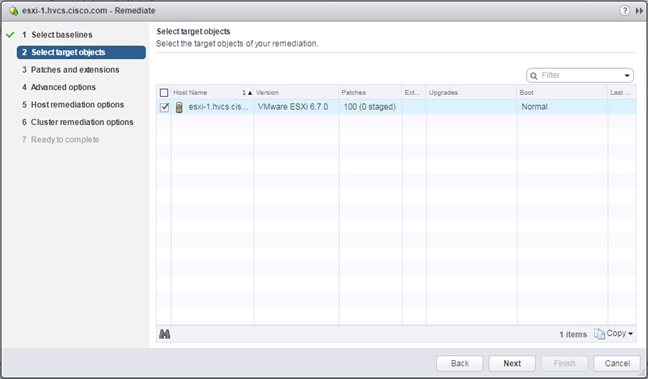

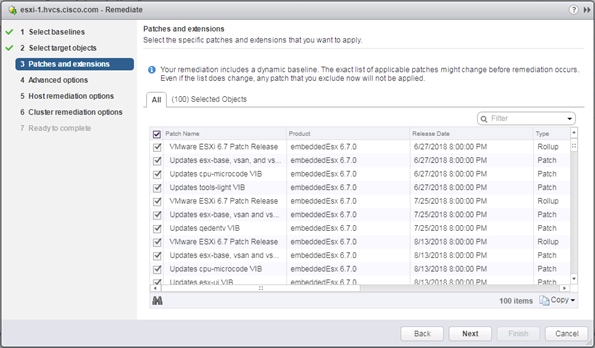

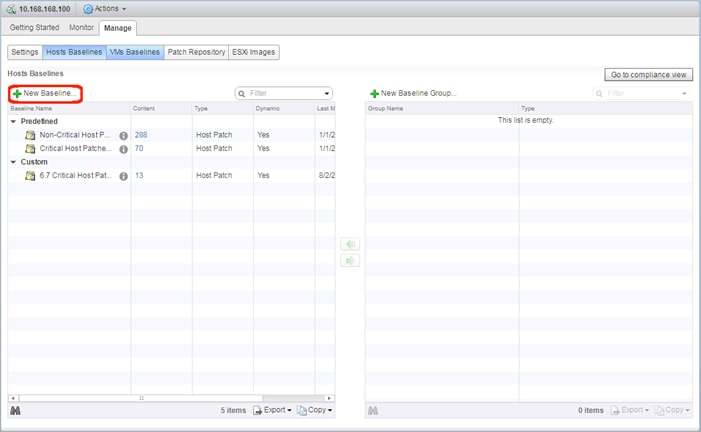

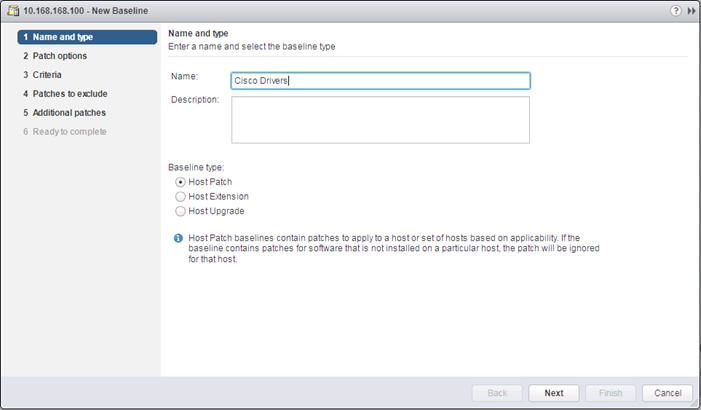

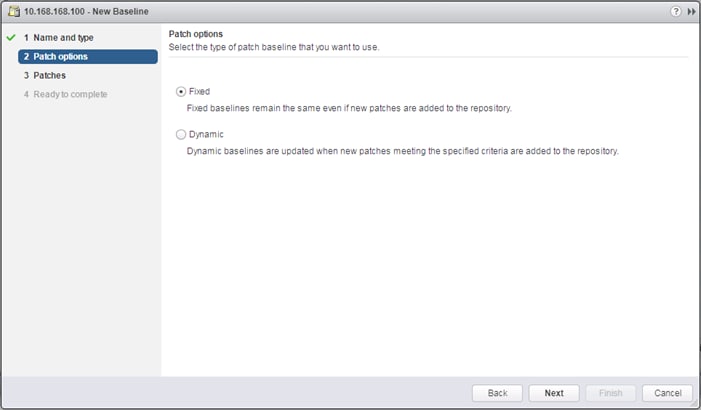

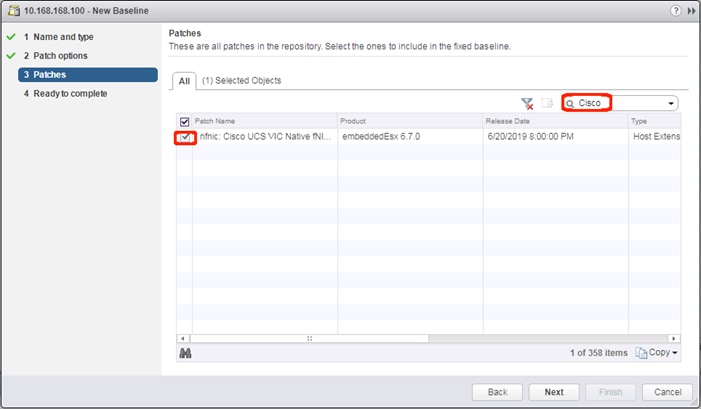

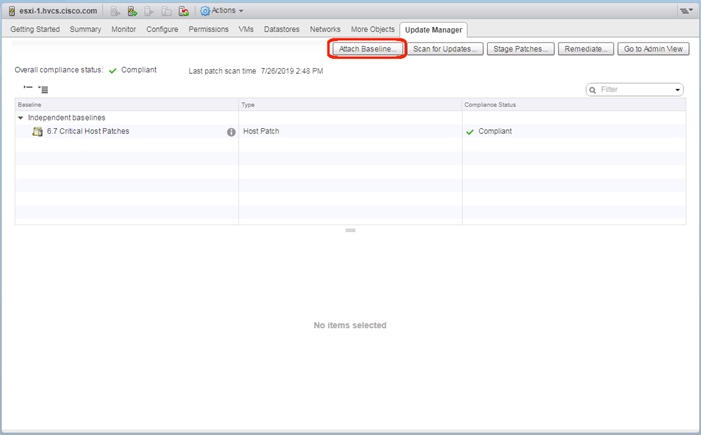

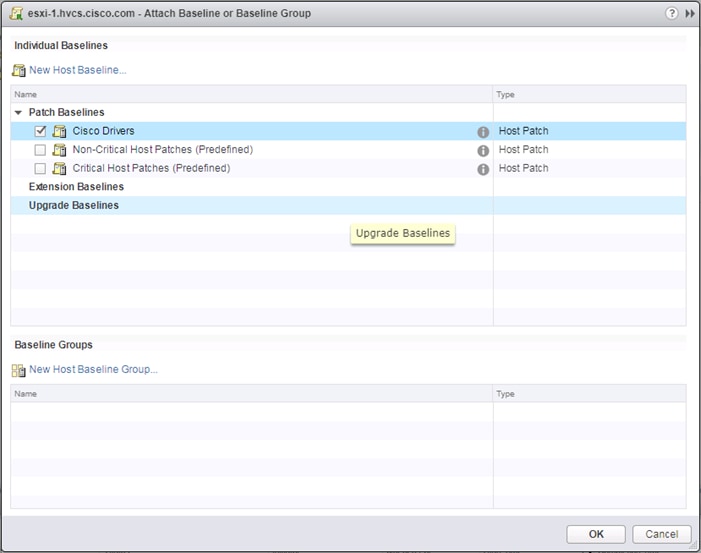

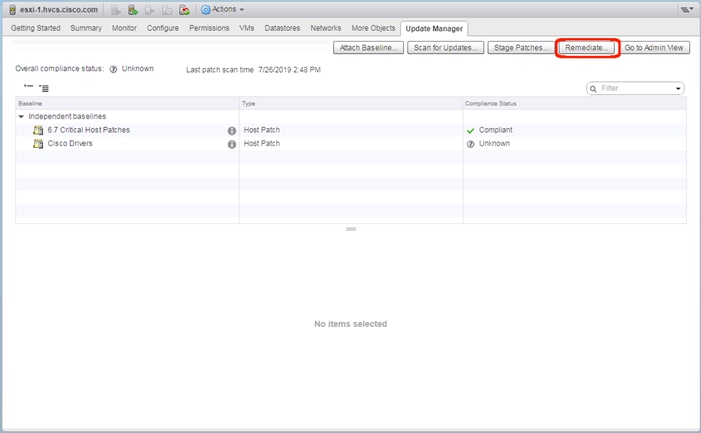

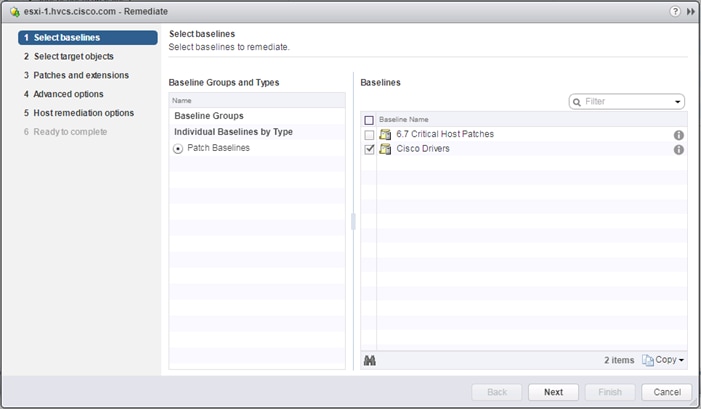

Create and Apply Patch Baselines with VUM

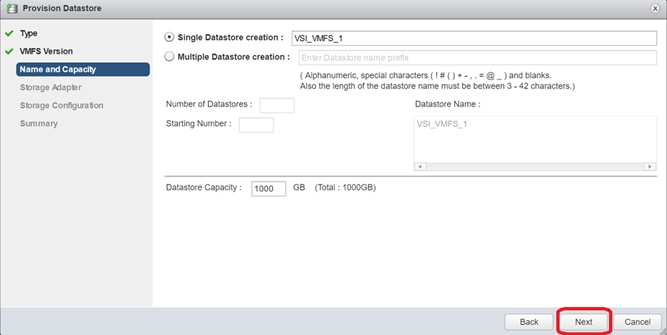

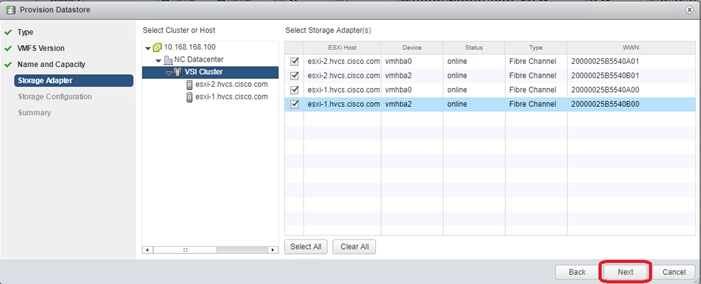

Allocate VMFS Datastore using Hitachi Storage Plug-in for VMware vCenter

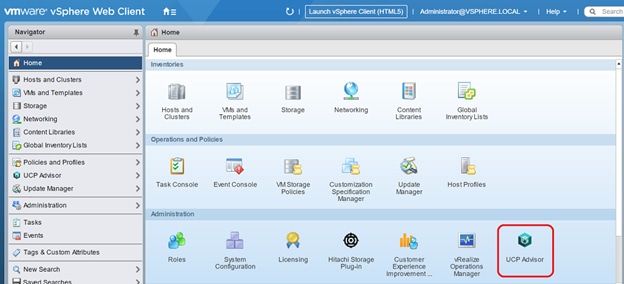

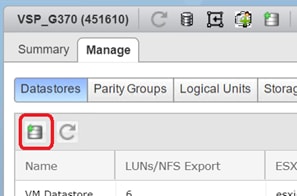

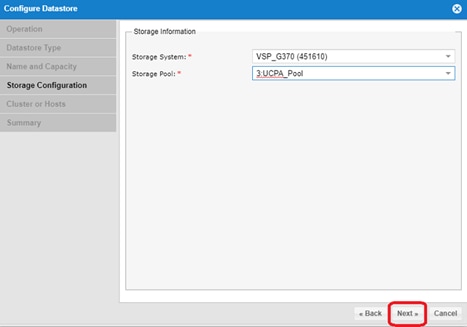

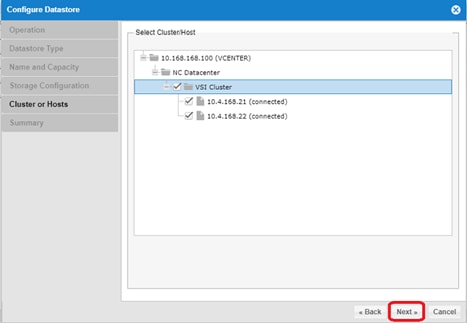

Allocate VMFS datastore using Hitachi Unified Compute Platform Advisor

Allocate VMFS Datastore using Hitachi Storage Provider for VMware vCenter (LDEV Storage Type)

Remediation of L1 Terminal Fault – VMM (L1TF) Security Vulnerability (Optional)

ACI Integration with Cisco UCS and vSphere

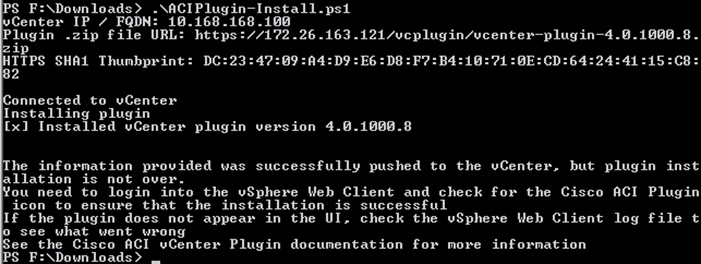

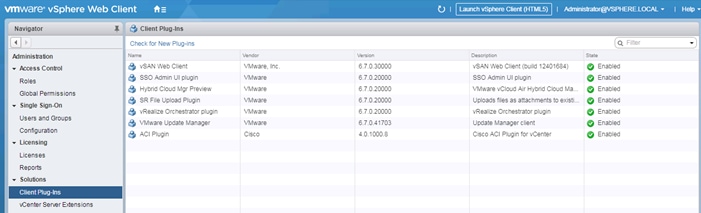

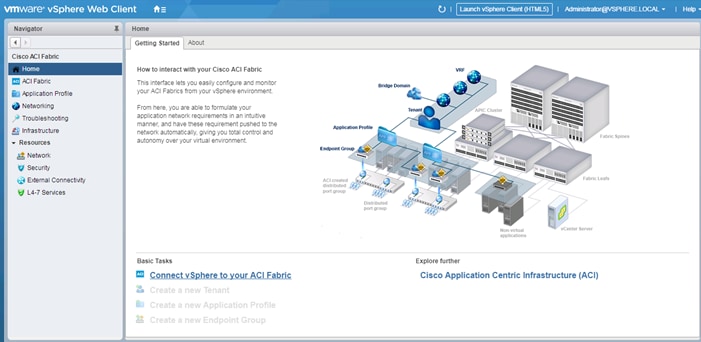

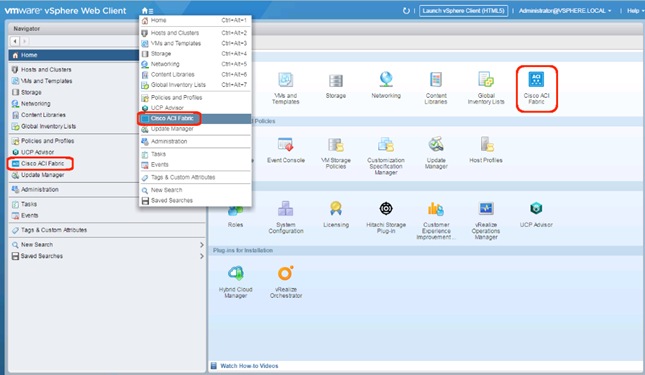

Cisco ACI vCenter Plug-in Installation

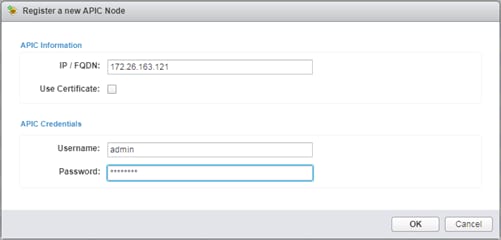

Create Virtual Machine Manager (VMM) Domain in APIC

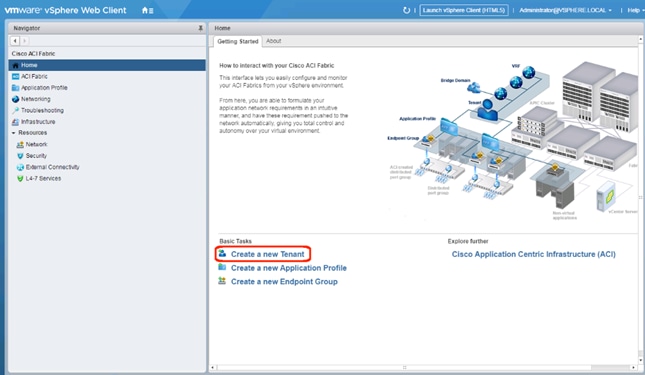

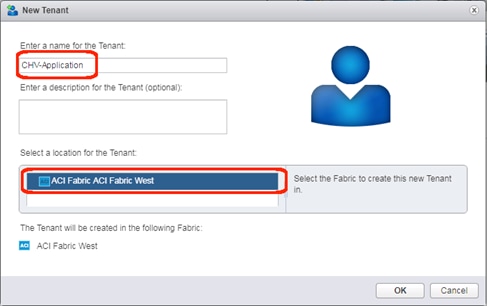

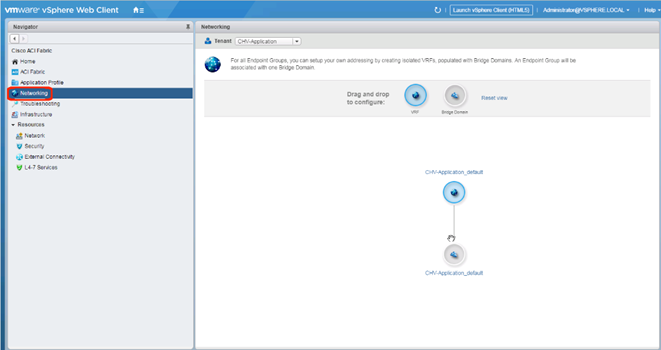

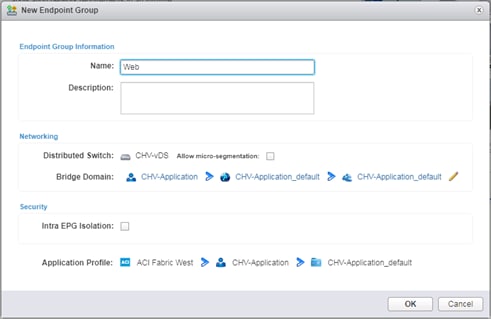

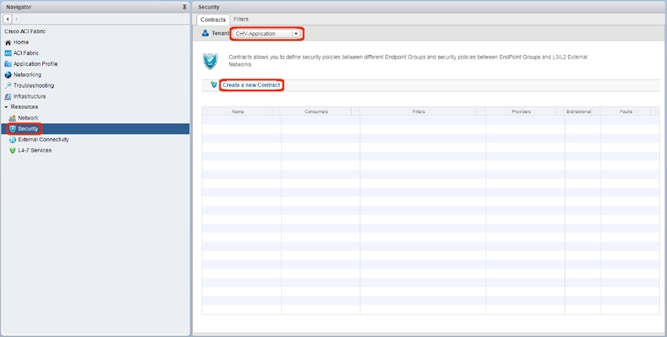

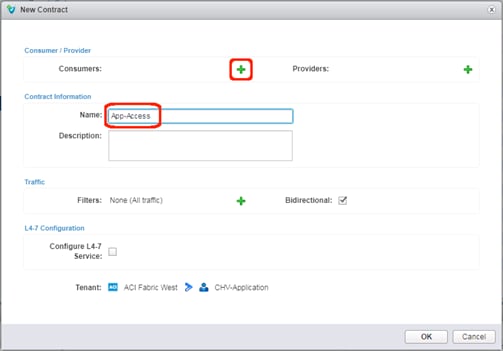

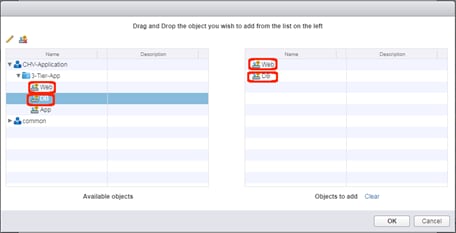

Create an Application Tenant with the Cisco ACI vCenter Plugin

Appendix: MDS Device Alias and Zoning through CLI

Appendix: MDS Example startup-configuration File

Appendix – Cisco Workload Optimization Manager

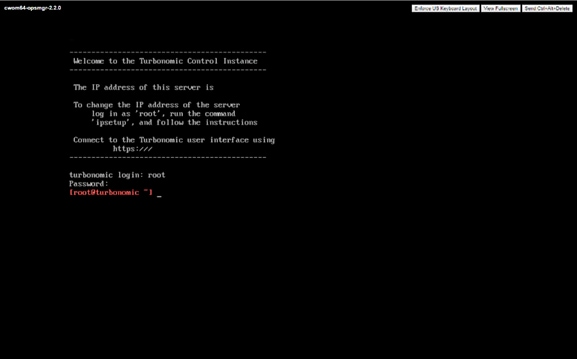

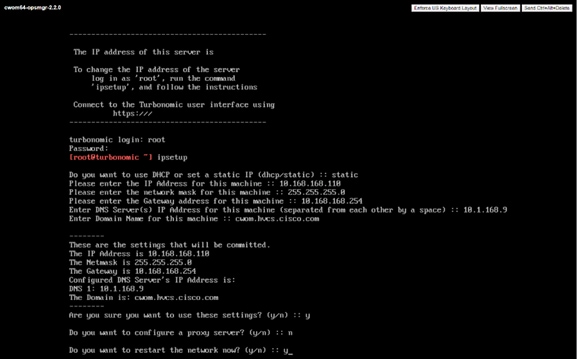

Cisco Workload Optimization Manager Setup

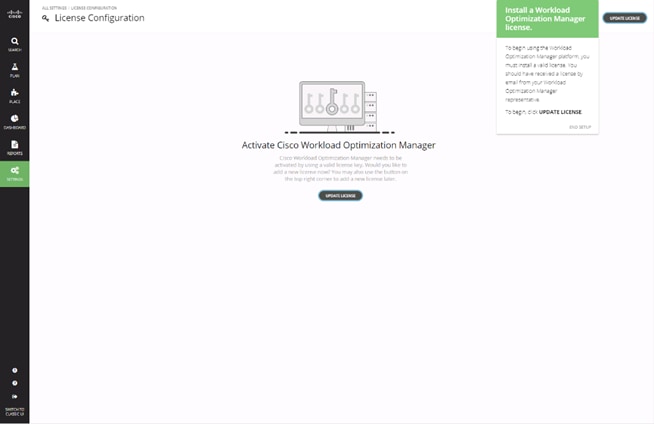

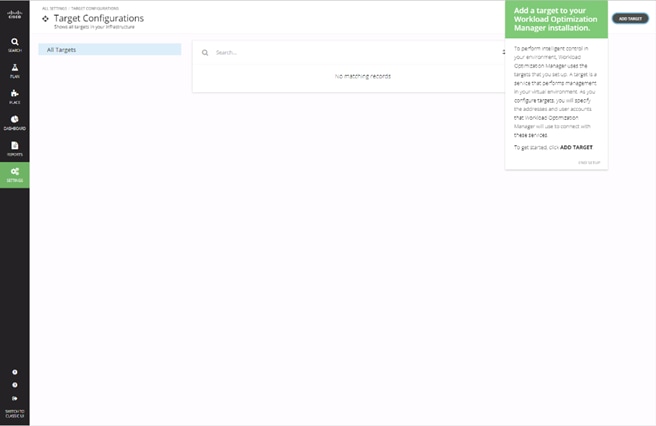

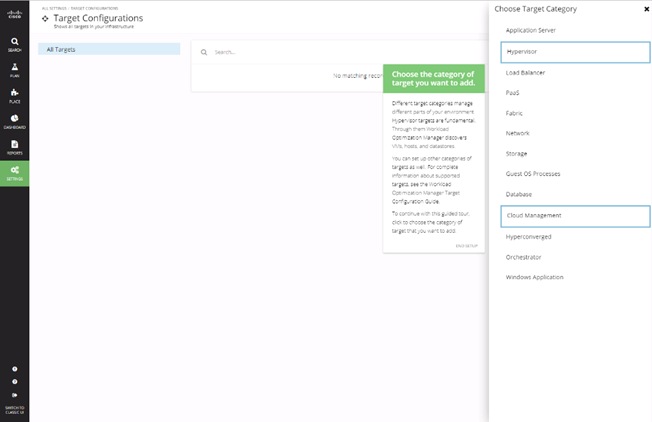

Install Workload Optimization Manager

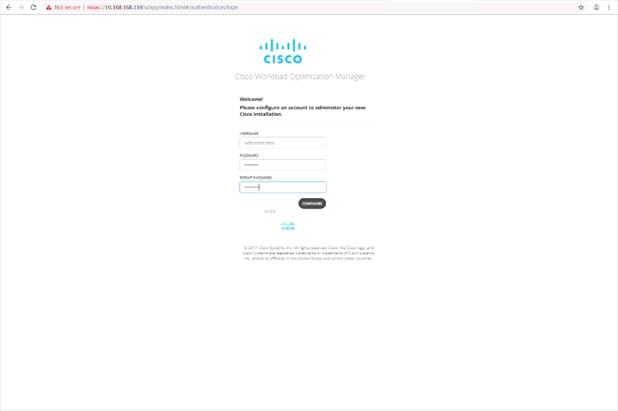

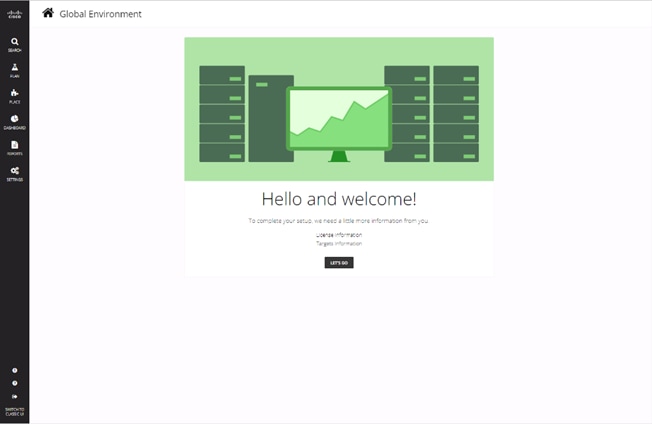

Initial Cisco Workload Optimization Manager Setup

License Installation and First Time Login

Cisco Validated Designs (CVDs) consist of systems and solutions that are designed, tested, and documented to facilitate and improve customer deployments. These designs incorporate a wide range of technologies and products into a portfolio of solutions that have been developed to address the business needs of our customers.

Cisco and Hitachi are working together to deliver a converged infrastructure solution that helps enterprise businesses meet the challenges of today and position themselves for the future. This CVD utilizes many of the same components as the initial Cisco and Hitachi Adaptive Solutions architecture but has been reimplemented to take advantage of the Cisco Application Centric Infrastructure (ACI).

Cisco ACI is a holistic architecture that introduces hardware and software innovations built upon the Cisco Nexus 9000® Series product line. Cisco ACI provides a centralized policy-driven application deployment architecture that is managed through the Cisco Application Policy Infrastructure Controller (APIC). Cisco ACI delivers software flexibility with the scalability of hardware performance.

This document steps through the deployment of the Cisco and Hitachi Adaptive Solutions for Converged Infrastructure as a Virtual Server Infrastructure (VSI) using Cisco ACI. This architecture is described in the Cisco and Hitachi Adaptive Solutions for Converged Infrastructure with ACI Design Guide. The recommended solution architecture is built on Cisco Unified Computing System (Cisco UCS) using the unified software release to support the Cisco UCS hardware platforms for the Cisco UCS B-Series Blade Server, Cisco UCS 6400 or 6300 Fabric Interconnects, Cisco Nexus 9000 Series switches, Cisco MDS 9000 Multilayer switches, and Hitachi Virtual Storage Platform (VSP).

Introduction

Modernizing your data center can be overwhelming, and it’s vital to select a trusted technology partner with proven expertise. With Cisco and Hitachi as partners, companies can build for the future by enhancing systems of record, supporting systems of innovation, and growing their business. Organizations need an agile solution, free from operational inefficiencies, to deliver continuous data availability, meet SLAs, and prioritize innovation.

Hitachi and Cisco Adaptive Solutions for Converged Infrastructure as a Virtual Server Infrastructure (VSI) is a best practice datacenter architecture built on the collaboration of Hitachi Vantara and Cisco to meet the needs of enterprise customers utilizing virtual server workloads. This architecture has been expanded to include the Cisco Application Centric Infrastructure (ACI) as an overarching SDN solution. Under the ACI umbrella, the Hitachi Virtual Storage Platform (VSP) connects through the Cisco MDS Multilayer Switch to the Cisco Unified Computing System (Cisco UCS) and is enabled within the network using the same Cisco Nexus family of switches.

The reference architecture covers specifics of products utilized within the Cisco validation lab, but the solution is considered relevant for equivalent supported components listed within Cisco and Hitachi Vantara’s published compatibility matrixes. Supported adjustments from the example validated build must be evaluated with care as their implementation instructions may differ.

Audience

The audience for this document includes, but is not limited to, sales engineers, field consultants, professional services, IT managers, partner engineers, and customers who want to modernize their infrastructure to meet SLAs and their business needs at any scale.

Purpose of this Document

This document provides a step-by-step configuration and implementation guide for the Cisco and Hitachi Adaptive Solutions for Converged Infrastructure solution configured with Cisco ACI 4.1. This solution features a validated reference architecture composed of:

· Cisco UCS Compute

· Cisco Nexus Switches with ACI

· Cisco MDS Multilayer Fabric Switches

· Hitachi Virtual Storage Platform

The design and technology decisions that went into this solution can be found in the accompanying Cisco and Hitachi Adaptive Solutions with Cisco ACI Design Guide.

What’s New in this Release?

The following design uses many of the concepts and best practices of the initial release, but in this release the primary differentiators are:

· Support for Cisco ACI 4.1

· Support for the Intel Cascade Lake Processors within Cisco UCS B200 M5 servers

· Support for Hitachi UCP Advisor

Architecture

Cisco and Hitachi Adaptive Solutions for Converged Infrastructure is a validated reference architecture targeting Virtual Server Infrastructure (VSI) implementations. The architecture is built around the Cisco Unified Computing System (Cisco UCS) and the Hitachi Virtual Storage Platform (VSP) connected together by Cisco MDS Multilayer SAN Switches, and in this release, designed with the Cisco Application Centric Infrastructure using Cisco Nexus Switches.

These components come together to form a powerful and scalable design, built on the best practices of both companies to create an ideal environment for virtualized systems.

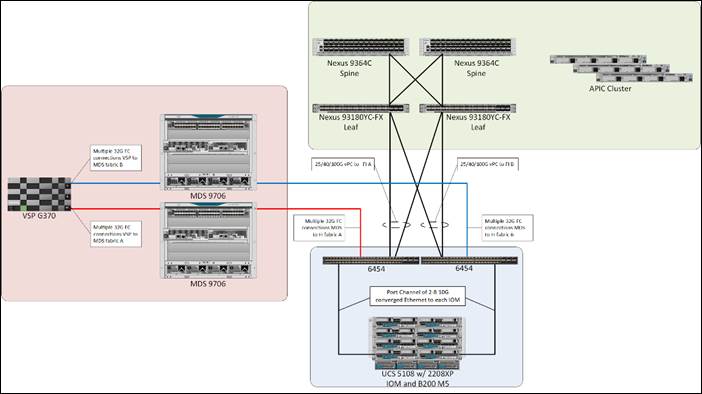

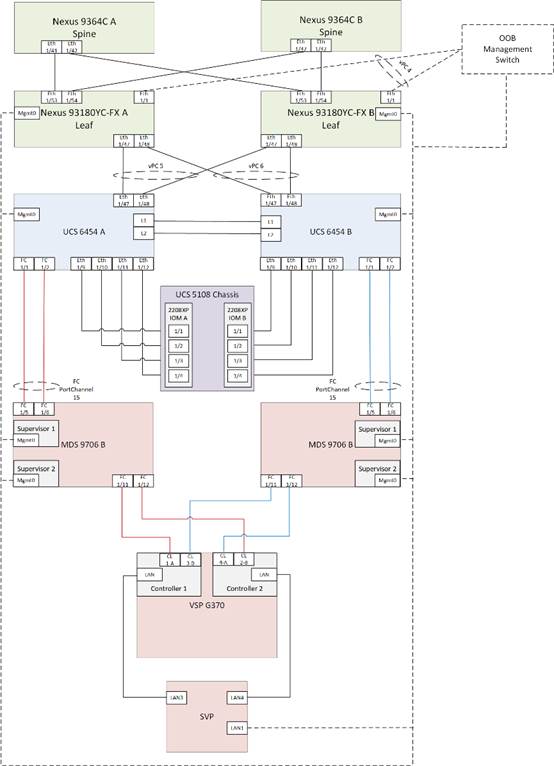

The solution is built and validated for a topology featuring the Cisco UCS Fabric Interconnect as well as the Hitachi VSP Storage System, using the MDS and the Nexus switching infrastructure, which is implemented with Cisco ACI. The topology, shown in Figure 1, includes:

· Cisco Nexus 93180YC-FX – 100Gb capable ACI leaves, giving LAN connectivity to the UCS compute resources.

· Cisco Nexus 9364C ACI spines, delivering the backbone of the upstream network.

· Cisco Application Policy Infrastructure Controllers, management servers for the ACI SDN implementation, giving API, CLI, and GUI options as an interface.

· Cisco UCS 6454 Fabric Interconnect – Unified management of UCS compute, and the compute’s access to storage and networks.

· Cisco UCS B200 M5 – High powered, versatile blade server, with Intel® Cascade Lake processors.

· Cisco MDS 9706 – 32Gbps Fibre Channel connectivity within the architecture, as well as interfacing to resources present in an existing data center.

· Hitachi VSP G370 – Mid-range, high performance storage system with optional all-flash configuration

The Cisco UCS B200 M5 servers in this topology are hosted within the same Cisco UCS 5108 Chassis but connect into the fabric interconnects from the chassis using Cisco UCS 2208XP IOMs. The 2208XP IOM supports up to 8 10G connections going into the Cisco UCS 6454 FIs, delivering a high bandwidth solution for either remote office or the corporate datacenter.

Management components for both architectures additionally include:

· Cisco UCS Manager – Management delivered through the Fabric Interconnect, providing stateless compute, and policy driven implementation of the servers managed by it.

· Cisco Intersight (optional) – Comprehensive unified visibility across UCS domains, along with proactive alerts and enablement of expedited Cisco TAC communications.

· Cisco Data Center Network Manager (optional) – Multi-layer network configuration and monitoring.

· Cisco Workload Optimization Manager (optional) – Resource optimization to deliver capex savings.

· Hitachi Storage Navigator – Management of Storage Virtualization Operating System (SVOS) on the VSP storage platform.

· Hitachi UCP Advisor (optional) – Comprehensive visibility and provisioning of VSP storage through vCenter.

The validation lab covered the above topology, as well as management components listed within a vSphere 6.7 U2 based hypervisor environment. vSphere 6.5 was not validated but is supported within the Cisco-Hitachi Interoperability partnership.

Hardware and Software Versions

Table 1 lists the validated hardware and software versions used for this solution. Configuration specifics are given in this deployment guide for the devices and versions listed in the following tables. Component and software version substitution from what is listed is considered acceptable within this reference architecture, but substitution will need to comply with the hardware and software compatibility matrices from both Cisco and Hitachi.

Cisco UCS Hardware Compatibility Matrix:

https://ucshcltool.cloudapps.cisco.com/public/

Cisco Nexus and MDS Interoperability Matrix:

Recommended Cisco APIC and Cisco Nexus 9000 Series ACI-Mode Switches Releases:

Cisco ACI Virtualization Compatibility Matrix:

Hitachi Vantara Interoperability:

https://support.hitachivantara.com/en_us/interoperability.html

In addition, any substituted hardware or software may have different configurations from what is detailed in this guide and will require a thorough evaluation of the substituted product reference documents.

Table 1 Validated Hardware and Software

| Component |

Software Version/Firmware Version |

|

| Network |

Cisco Nexus 93180YC-FX (leaf) |

14.1(2g) |

| Cisco Nexus 9364C (spine) |

14.1(2g) |

|

| Cisco APIC M2 |

4.1(2g) |

|

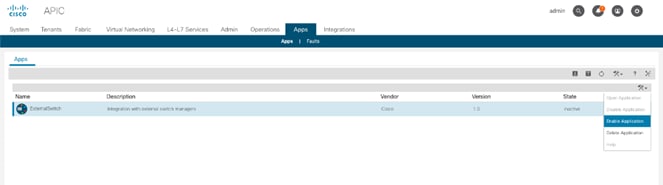

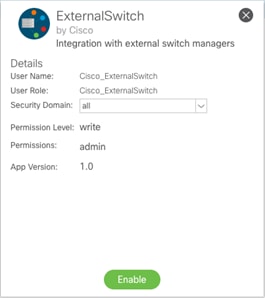

| Cisco ExternalSwitch |

1.0 |

|

| Cisco ACI Plugin |

4.1.2000.7 |

|

| Compute |

Cisco UCS Fabric Interconnect 6454 |

4.0(4b) |

| Cisco UCS 2208XP IOM |

4.0(4b) |

|

| Cisco UCS B200 M5 |

4.0(4b) |

|

| VMware vSphere |

6.7 U2 VMware_ESXi_6.7.0_13006603_Custom_Cisco_6.7.2.1.iso |

|

| ESXi 6.7 U2 nenic |

1.0.29.0 |

|

| ESXi 6.7 U2 nfnic |

4.0.0.38 |

|

| VMware vCenter Server Appliance |

6.7 U2 |

|

| VM Virtual Hardware Version |

13 |

|

| Storage |

Hitachi VSP G370 |

88-03-23 (SVOS 8.3.1) |

| Hitachi UCP Advisor |

3.0 |

|

| Hitachi Storage Plugin for vCenter |

3.10.0 |

|

| Hitachi Storage Provider for VMware vCenter (VASA) |

3.5.6 |

|

| Cisco MDS 9706 |

8.3(1) |

|

| Cisco DCNM |

11.2(1) |

|
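Because substitutions from Table 1 must be checked against the compatibility matrices, it can help to list exactly which deployed versions differ from the validated ones. The following is a minimal sketch of that comparison, not a tool shipped with the solution. The `VALIDATED` dictionary copies a subset of Table 1; the `deployed` inventory and its MDS release are hypothetical values chosen for illustration.

```python
# Validated versions copied from Table 1 (subset shown for brevity).
VALIDATED = {
    "Cisco Nexus 93180YC-FX (leaf)": "14.1(2g)",
    "Cisco Nexus 9364C (spine)": "14.1(2g)",
    "Cisco APIC M2": "4.1(2g)",
    "Cisco UCS Fabric Interconnect 6454": "4.0(4b)",
    "Cisco MDS 9706": "8.3(1)",
    "Hitachi VSP G370": "88-03-23 (SVOS 8.3.1)",
}


def substitutions(deployed: dict) -> dict:
    """Map each component whose version differs from Table 1 to (validated, deployed)."""
    return {
        name: (VALIDATED.get(name), version)
        for name, version in deployed.items()
        if VALIDATED.get(name) != version
    }


# Hypothetical inventory: the MDS release below is invented for illustration.
deployed = {
    "Cisco APIC M2": "4.1(2g)",
    "Cisco MDS 9706": "8.4(1)",
}

for name, (validated, actual) in substitutions(deployed).items():
    print(f"{name}: validated {validated}, deployed {actual}; check the compatibility matrices")
```

Any component reported by this comparison is a candidate for review against the Cisco and Hitachi matrices linked above; the sketch itself makes no compatibility judgment.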

Configuration Guidelines

This document provides details for configuring a fully redundant, highly available configuration for the Cisco and Hitachi Converged Infrastructure. Each step identifies the component being configured with a “-1” or “-2” suffix. For example, AA19-9706-1 and AA19-9706-2 identify the two MDS switches that are provisioned with this document, and AA19-9706-1&2 represents a command invoked on both MDS switches. The Cisco UCS fabric interconnects are similarly configured. Additionally, this document details the steps for provisioning multiple Cisco UCS hosts, and these examples are identified as VM-Host-Infra-01 and VM-Host-Infra-02 to represent hosts deployed to each of the fabric interconnects in this document. Finally, to indicate that you should include information pertinent to your environment in a given step, <<text>> appears as part of the command structure.

See the following example of a configuration step for both MDS switches:

AA19-9706-1&2 (config)# ntp server <<var_oob_ntp>>

This document is intended to enable you to fully configure the customer environment. In this process, various steps require you to insert customer-specific naming conventions, IP addresses, and VLAN schemes, as well as to record appropriate MAC addresses. The tables provided can be copied or printed for use as a reference to align the appropriate customer deployed values for configuration specifics used within the guide.
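The placeholder convention described above lends itself to simple templating when commands are prepared ahead of time. The following is a minimal sketch, assuming the recorded customer values are kept in a dictionary keyed by variable name; the `render` helper is hypothetical and not part of any Cisco tool. The NTP address is the example value from Table 3.

```python
import re

# Matches placeholders written as <<var_name>>, the convention used in this guide.
PLACEHOLDER = re.compile(r"<<(\w+)>>")


def render(template: str, values: dict) -> str:
    """Replace every <<var_name>> in template with its customer-deployed value."""
    def substitute(match):
        name = match.group(1)
        if name not in values:
            # Fail loudly rather than send a half-filled command to a switch.
            raise KeyError(f"no customer value recorded for <<{name}>>")
        return values[name]
    return PLACEHOLDER.sub(substitute, template)


# Example value from Table 3; replace with the customer-deployed value.
customer_values = {"var_oob_ntp": "172.26.163.254"}

command = render("ntp server <<var_oob_ntp>>", customer_values)
print(command)
```

Raising an error on an unrecorded variable is a deliberate choice in this sketch: a command that still contains a placeholder should never reach a device.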

Table 2 lists the VLANs necessary for deployment as outlined in this guide.

Table 2 VLANs Used in the Deployment

| VLAN Name | VLAN Purpose | ID Used in Validating this Document | Customer Deployed Value |
| --- | --- | --- | --- |
| Internal-Infra | VLAN for Internal Infrastructure (UCSM/VSP) | 119 | |
| Common | VLAN for Shared Infrastructure (AD/DNS) | 319 | |
| Host-Mgmt | VLAN for Hypervisor Hosts (ESXi) | 419 | |
| vMotion | VLAN for vSphere vMotion traffic | 519 | |
| Native | VLAN to which untagged frames are assigned | 2 | |
| App-vDS-[1-100] | VLAN for Application VM Interfaces residing in vDS based port groups | 1100-1199 | |
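Before substituting customer VLAN IDs into Table 2, it is worth confirming that no ID is reused and that every ID is a valid 802.1Q value. The sketch below does this for the validated IDs. The one-to-one, in-order pairing of App-vDS-1 through App-vDS-100 with VLANs 1100 through 1199 is an assumption made for illustration, and `check_vlan_plan` is a hypothetical helper.

```python
# VLAN IDs used in validating this document (Table 2).
infra_vlans = {
    "Internal-Infra": 119,
    "Common": 319,
    "Host-Mgmt": 419,
    "vMotion": 519,
    "Native": 2,
}

# Assumption: App-vDS-N maps to VLAN 1099 + N, covering 1100-1199.
app_vlans = {f"App-vDS-{i}": 1099 + i for i in range(1, 101)}


def check_vlan_plan(*plans: dict) -> dict:
    """Merge name-to-ID plans, raising if an ID is out of range or used twice."""
    owner_by_id = {}
    merged = {}
    for plan in plans:
        for name, vlan_id in plan.items():
            if not 1 <= vlan_id <= 4094:
                raise ValueError(f"{name}: VLAN {vlan_id} is outside 1-4094")
            if vlan_id in owner_by_id:
                raise ValueError(f"VLAN {vlan_id} used by {owner_by_id[vlan_id]} and {name}")
            owner_by_id[vlan_id] = name
            merged[name] = vlan_id
    return merged


plan = check_vlan_plan(infra_vlans, app_vlans)
print(len(plan), "VLANs planned without conflicts")
```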

Table 3 lists additional configuration variables that are used throughout the document as pointers to where a customer-provided name, or a reference to relevant existing information, will be used.

Table 3 Variables for Information Used in the Design

| Variable | Variable Description | Customer Deployed Value |
| --- | --- | --- |
| <<var_nexus_A_hostname>> | Nexus switch A hostname (Example: AA20-93180-1) | |
| <<var_nexus_A_mgmt_ip>> | Out-of-band management IP for Nexus switch A (Example: 172.16.163.108) | |
| <<var_nexus_B_hostname>> | Nexus switch B hostname (Example: AA20-93180-2) | |
| <<var_nexus_B_mgmt_ip>> | Out-of-band management IP for Nexus switch B (Example: 172.26.163.109) | |
| <<var_oob_mgmt_mask>> | Out-of-band management network netmask (Example: 255.255.255.0) | |
| <<var_oob_gateway>> | Out-of-band management network gateway (Example: 172.26.163.254) | |
| <<var_oob_ntp>> | Out-of-band management network NTP server (Example: 172.26.163.254) | |
| <<var_password>> | Administrative password (Example: N0taP4ss) | |
| <<var_dns_domain_name>> | DNS domain name (Example: ucp.cisco.com) | |
| <<var_nameserver_ip>> | DNS server IP(s) (Example: 10.1.168.9) | |
| <<var_timezone>> | Time zone (Example: America/New_York) | |
| <<var_ib_mgmt_vlan_id>> | In-band (Site Infra) management network VLAN ID (Example: 119) | |
| <<var_ib_mgmt_vlan_netmask_length>> | Length of Site-Infra-VLAN Netmask (Example: /24) | |
| <<var_ib_gateway_ip>> | Site Infra management network gateway IP (Example: 10.1.168.254) | |
| <<var_vmotion_vlan_id>> | vMotion management network VLAN ID (Example: 519) | |
| <<var_vmotion_vlan_netmask_length>> | Length of vMotion-VLAN Netmask (Example: /24) | |
| <<var_vsan_a_id>> | VSAN used for the A Fabric between the VSP/FI (Example: 101) | |
| <<var_vsan_b_id>> | VSAN used for the B Fabric between the VSP/FI (Example: 102) | |
| <<vsp_hostname>> <<vsp-g370>> | Hitachi VSP storage system name (Example: g370-[Serial Number]) | |
| <<var_ucs_clustername>> <<var_ucs_6454_clustername>> | Cisco UCS Manager cluster host name (Example: AA19-6454) | |
| <<var_ucsa_mgmt_ip>> | Cisco UCS fabric interconnect (FI) A out-of-band management IP address (Example: 10.1.168.16) | |
| <<var_ucs_mgmt_vip>> | Cisco UCS fabric interconnect (FI) Cluster out-of-band management IP address (Example: 10.1.168.15) | |
| <<var_ucsb_mgmt_ip>> | Cisco UCS FI B out-of-band management IP address (Example: 10.1.168.17) | |
| <<var_vm_host_infra_01_ip>> | VMware ESXi host 01 in-band management IP (Example: 10.4.168.21) | |
| <<var_vm_host_infra_02_ip>> | VMware ESXi host 02 in-band management IP (Example: 10.4.168.22) | |
| <<var_vm_host_infra_vmotion_01_ip>> | VMware ESXi host 01 vMotion IP (Example: 192.168.100.21) | |
| <<var_vm_host_infra_vmotion_02_ip>> | VMware ESXi host 02 vMotion IP (Example: 192.168.100.22) | |
| <<var_vmotion_subnet_mask>> | vMotion subnet mask (Example: 255.255.255.0) | |
| <<var_vcenter_server_ip>> | IP address of the vCenter Server (Example: 10.168.168.100) | |
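Addresses recorded in Table 3 that belong to the same network, such as the two vMotion addresses, should fall in one subnet under the recorded mask. The following minimal sketch checks this with the Python standard library `ipaddress` module, using the example vMotion values from Table 3; the helper names are hypothetical.

```python
import ipaddress


def subnet_of(address: str, netmask: str) -> ipaddress.IPv4Network:
    """Return the network that contains address under the given netmask."""
    # strict=False lets a host address stand in for its network.
    return ipaddress.ip_network(f"{address}/{netmask}", strict=False)


def share_subnet(addresses, netmask: str) -> bool:
    """True when every address falls in the same subnet."""
    return len({subnet_of(addr, netmask) for addr in addresses}) == 1


# Example values from Table 3; replace with customer-deployed values.
vmotion_ips = ["192.168.100.21", "192.168.100.22"]
vmotion_mask = "255.255.255.0"

print(share_subnet(vmotion_ips, vmotion_mask))
```

The same check can be applied to any group of Table 3 addresses that is expected to share a network, together with the matching mask variable.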

Physical Cabling

This section explains the cabling examples used for the validated topology in the environment. To make connectivity clear in this example, the tables include both the local and remote port locations.

Physical Cabling for the UCS 6454 with the VSP G370

Figure 2 shows the cabling configuration used in the design featuring the Cisco UCS 6454 FI with the Hitachi VSP G370.

Figure 2 Cisco UCS 6454 and VSP G370 with ACI Cabling Diagram

The following tables list the specific port connections and cables used in the deployment of the Cisco UCS 6454 and the VSP G370.

Table 4 Cisco Nexus 93180YC-FX A (Leaf) Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Cisco Nexus 93180YC-FX A | Eth1/1 | GbE | GbE management switch | Any |
| Cisco Nexus 93180YC-FX A | Eth1/47 | 25GbE | Cisco UCS 6454 FI A | Eth1/47 |
| Cisco Nexus 93180YC-FX A | Eth1/48 | 25GbE | Cisco UCS 6454 FI B | Eth1/47 |
| Cisco Nexus 93180YC-FX A | Eth1/53 | 40GbE or 100GbE | Cisco 9364C A (Spine) | Eth1/41 |
| Cisco Nexus 93180YC-FX A | Eth1/54 | 40GbE or 100GbE | Cisco 9364C B (Spine) | Eth1/41 |
| Cisco Nexus 93180YC-FX A | MGMT0 | GbE | GbE management switch | Any |

Table 5 Cisco Nexus 93180YC-FX B (Leaf) Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Cisco Nexus 93180YC-FX B | Eth1/1 | GbE | GbE management switch | Any |
| Cisco Nexus 93180YC-FX B | Eth1/47 | 25GbE | Cisco UCS 6454 FI A | Eth1/48 |
| Cisco Nexus 93180YC-FX B | Eth1/48 | 25GbE | Cisco UCS 6454 FI B | Eth1/48 |
| Cisco Nexus 93180YC-FX B | Eth1/53 | 40GbE or 100GbE | Cisco 9364C A (Spine) | Eth1/42 |
| Cisco Nexus 93180YC-FX B | Eth1/54 | 40GbE or 100GbE | Cisco 9364C B (Spine) | Eth1/42 |
| Cisco Nexus 93180YC-FX B | MGMT0 | GbE | GbE management switch | Any |

Table 6 Cisco UCS 6454 A Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Cisco UCS 6454 FI A | FC 1/1 | 32Gb FC | MDS 9706 A | FC 1/5 |
| Cisco UCS 6454 FI A | FC 1/2 | 32Gb FC | MDS 9706 A | FC 1/6 |
| Cisco UCS 6454 FI A | Eth1/9 | 10GbE | Cisco UCS Chassis 2208XP FEX A | IOM 1/1 |
| Cisco UCS 6454 FI A | Eth1/10 | 10GbE | Cisco UCS Chassis 2208XP FEX A | IOM 1/2 |
| Cisco UCS 6454 FI A | Eth1/11 | 10GbE | Cisco UCS Chassis 2208XP FEX A | IOM 1/3 |
| Cisco UCS 6454 FI A | Eth1/12 | 10GbE | Cisco UCS Chassis 2208XP FEX A | IOM 1/4 |
| Cisco UCS 6454 FI A | Eth1/47 | 25GbE | Cisco Nexus 93180YC-FX A | Eth1/47 |
| Cisco UCS 6454 FI A | Eth1/48 | 25GbE | Cisco Nexus 93180YC-FX B | Eth1/47 |
| Cisco UCS 6454 FI A | MGMT0 | GbE | GbE management switch | Any |
| Cisco UCS 6454 FI A | L1 | GbE | Cisco UCS 6454 FI B | L1 |
| Cisco UCS 6454 FI A | L2 | GbE | Cisco UCS 6454 FI B | L2 |

Ports 1-8 on the Cisco UCS 6454 are unified ports that can be configured as Ethernet or as Fibre Channel ports. Server ports should initially be deployed starting with port 1/9 to give flexibility for FC port needs, and ports 49-54 are not configurable as server ports. Also, ports 45-48 are the only ports configurable for 1Gbps connections that may be needed to a network switch.
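The port rules in the note above can be captured in a small lookup for use when planning port assignments. This is a minimal sketch that encodes only what the note states; the `port_rules` function is hypothetical, and the upper bound of 54 is inferred from the highest port number the note mentions.

```python
def port_rules(port: int) -> dict:
    """Summarize what the note says about a Cisco UCS 6454 port number."""
    if not 1 <= port <= 54:
        raise ValueError(f"port {port} is outside the 1-54 range covered by the note")
    return {
        # Ports 1-8 are unified: Ethernet or Fibre Channel.
        "unified": port <= 8,
        # Ports 49-54 cannot be configured as server ports.
        "server_port_allowed": port <= 48,
        # Starting server ports at 9 keeps ports 1-8 free for FC needs.
        "server_port_recommended": 9 <= port <= 48,
        # Only ports 45-48 can be configured for 1Gbps connections.
        "supports_1g": 45 <= port <= 48,
    }


for port in (1, 9, 47, 49):
    print(port, port_rules(port))
```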

Table 7 Cisco UCS 6454 B Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Cisco UCS 6454 FI B | FC 1/1 | 32Gb FC | MDS 9706 B | FC 1/5 |
| Cisco UCS 6454 FI B | FC 1/2 | 32Gb FC | MDS 9706 B | FC 1/6 |
| Cisco UCS 6454 FI B | Eth1/9 | 10GbE | Cisco UCS Chassis 2208XP FEX B | IOM 1/1 |
| Cisco UCS 6454 FI B | Eth1/10 | 10GbE | Cisco UCS Chassis 2208XP FEX B | IOM 1/2 |
| Cisco UCS 6454 FI B | Eth1/11 | 10GbE | Cisco UCS Chassis 2208XP FEX B | IOM 1/3 |
| Cisco UCS 6454 FI B | Eth1/12 | 10GbE | Cisco UCS Chassis 2208XP FEX B | IOM 1/4 |
| Cisco UCS 6454 FI B | Eth1/47 | 25GbE | Cisco Nexus 93180YC-FX A | Eth1/48 |
| Cisco UCS 6454 FI B | Eth1/48 | 25GbE | Cisco Nexus 93180YC-FX B | Eth1/48 |
| Cisco UCS 6454 FI B | MGMT0 | GbE | GbE management switch | Any |
| Cisco UCS 6454 FI B | L1 | GbE | Cisco UCS 6454 FI A | L1 |
| Cisco UCS 6454 FI B | L2 | GbE | Cisco UCS 6454 FI A | L2 |
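Each link between a leaf and a fabric interconnect appears twice in Tables 4 through 7, once from each end, so a cabling plan can be checked by confirming that every entry has a mirror entry. The following is a minimal sketch of that check for the 25GbE uplinks. The short device names (`Nexus-A`, `FI-A`, and so on) are abbreviations introduced here, and `unmatched` is a hypothetical helper.

```python
# (local device, local port, remote device, remote port), from Tables 4-7.
links = [
    ("Nexus-A", "Eth1/47", "FI-A", "Eth1/47"),
    ("Nexus-A", "Eth1/48", "FI-B", "Eth1/47"),
    ("Nexus-B", "Eth1/47", "FI-A", "Eth1/48"),
    ("Nexus-B", "Eth1/48", "FI-B", "Eth1/48"),
    ("FI-A", "Eth1/47", "Nexus-A", "Eth1/47"),
    ("FI-A", "Eth1/48", "Nexus-B", "Eth1/47"),
    ("FI-B", "Eth1/47", "Nexus-A", "Eth1/48"),
    ("FI-B", "Eth1/48", "Nexus-B", "Eth1/48"),
]


def unmatched(entries):
    """Return entries whose reverse direction is not listed by the remote device."""
    known = set(entries)
    return sorted(
        entry for entry in known
        if (entry[2], entry[3], entry[0], entry[1]) not in known
    )


problems = unmatched(links)
print("cabling tables are consistent" if not problems else problems)
```

The same approach extends to the Fibre Channel links between the fabric interconnects, the MDS switches, and the VSP by adding their entries to the list.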

Table 8 Cisco MDS 9706 A Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Cisco MDS 9706 A | FC 1/5 | 32Gb FC | Cisco UCS 6454 FI A | FC 1/1 |
| Cisco MDS 9706 A | FC 1/6 | 32Gb FC | Cisco UCS 6454 FI A | FC 1/2 |
| Cisco MDS 9706 A | FC 1/11 | 32Gb FC | VSP G370 Controller 1 | CL 1-A |
| Cisco MDS 9706 A | FC 1/12 | 32Gb FC | VSP G370 Controller 2 | CL 2-B |
| Cisco MDS 9706 A | Sup1 MGMT0 | GbE | GbE management switch | Any |
| Cisco MDS 9706 A | Sup2 MGMT0 | GbE | GbE management switch | Any |

Table 9 Cisco MDS 9706 B Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Cisco MDS 9706 B | FC 1/5 | 32Gb FC | Cisco UCS 6454 FI B | FC 1/1 |
| Cisco MDS 9706 B | FC 1/6 | 32Gb FC | Cisco UCS 6454 FI B | FC 1/2 |
| Cisco MDS 9706 B | FC 1/11 | 32Gb FC | VSP G370 Controller 1 | CL 3-B |
| Cisco MDS 9706 B | FC 1/12 | 32Gb FC | VSP G370 Controller 2 | CL 4-A |
| Cisco MDS 9706 B | Sup1 MGMT0 | GbE | GbE management switch | Any |
| Cisco MDS 9706 B | Sup2 MGMT0 | GbE | GbE management switch | Any |

Table 10 Hitachi VSP G370 Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Hitachi VSP G370 | CL 1-A | 32Gb FC | MDS 9706 A | FC 1/11 |
| Hitachi VSP G370 | CL 2-B | 32Gb FC | MDS 9706 A | FC 1/12 |
| Hitachi VSP G370 | CL 3-B | 32Gb FC | MDS 9706 B | FC 1/11 |
| Hitachi VSP G370 | CL 4-A | 32Gb FC | MDS 9706 B | FC 1/12 |
| Hitachi VSP G370 | Cont1 LAN | GbE | SVP | LAN3 |
| Hitachi VSP G370 | Cont2 LAN | GbE | SVP | LAN4 |

SVP will be configured by a Hitachi Vantara support engineer at the time of initial configuration and is out of scope of the primary deployment.

This section provides a detailed procedure for configuring the Cisco ACI fabric for use in the environment. It is written for the case where the components are added to an existing Cisco ACI fabric as several new ACI tenants. Required fabric setup is verified, but previous configuration of the ACI fabric is assumed.

In ACI, both spine and leaf switches are configured using the APIC; individual configuration of the switches is not required. The Cisco APIC discovers the ACI infrastructure switches using LLDP and acts as the central control and management point for the entire configuration.

Physical Connectivity

Physical cabling should be completed by following the diagram and table references found in the Physical Cabling section.

Cisco Application Policy Infrastructure Controller (APIC) Verification

To verify the setup of the Cisco APIC, follow these steps:

Cisco recommends a cluster of at least 3 APICs controlling an ACI Fabric.

1. Log into the APIC GUI using a web browser, by browsing to the out-of-band IP address configured for the APIC. Log in with the admin user ID and password.

2. Take the appropriate action to close any warning or information screens.

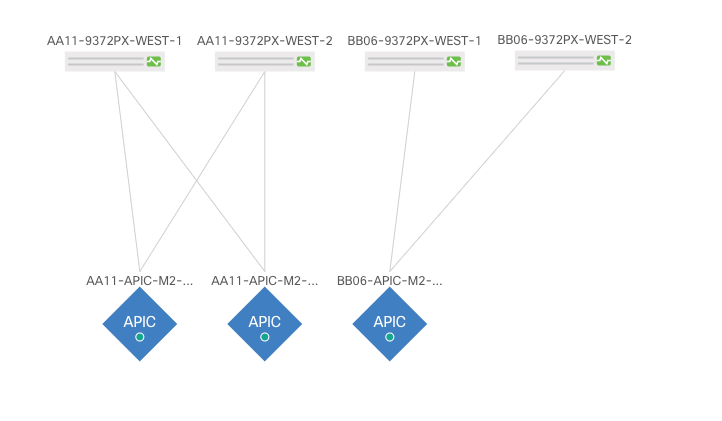

3. From the APIC home page, select the System tab followed by Controllers.

4. Select the Controllers folder. Verify that at least 3 APICs are available and have redundant connections to the fabric.

Cisco ACI Fabric Discovery

This section details the steps for adding the two Nexus 93180YC-FX leaf switches to the fabric. The procedure assumes that dedicated leaves are being added to an established ACI fabric. If the two Nexus 93180YC-FX leaves have already been added to the fabric, continue to the next section. These switches are automatically discovered in the ACI fabric and are manually assigned node IDs. To add the Nexus 93180YC-FX leaf switches to the ACI fabric, follow these steps:

1. At the top in the APIC home page, select the Fabric tab and make sure Inventory under Fabric is selected.

2. In the left pane, select and expand Fabric Membership.

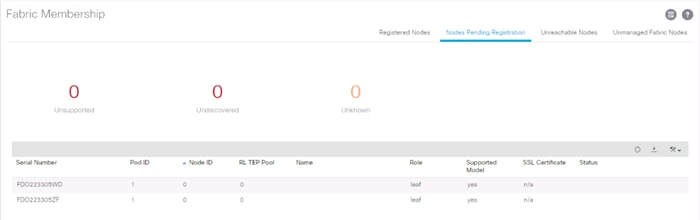

3. The two 93180YC-FX Leaf Switches will be listed on the Fabric Membership page within the Nodes Pending Registration tab as Node ID 0 as shown:

For auto-discovery to occur by the APIC, the leaves will need to be running an ACI mode switch software release. For instructions on migrating from NX-OS, please refer to: https://www.cisco.com/c/en/us/td/docs/switches/datacenter/aci/apic/sw/kb/b_KB_Converting_N9KSwitch_NXOSStandaloneMode_to_ACIMode.html

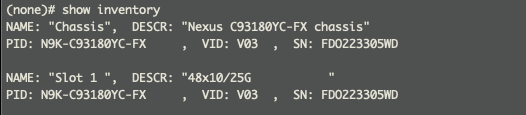

4. Connect to the two Nexus 93180YC-FX leaf switches using serial consoles and log in as admin with no password (press Enter). Use show inventory to get each leaf’s serial number.

5. Match the serial numbers from the leaf listing to determine the A and B switches under Fabric Membership.

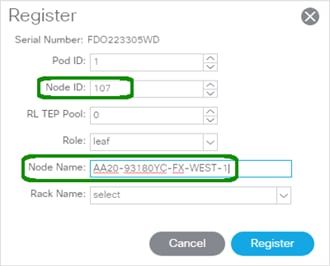

6. In the APIC GUI, within Nodes Pending Registration under Fabric Membership, right click the A leaf in the list and select Register.

7. Enter a Node ID and a Node Name for the Leaf switch and click Register.

8. Repeat steps 6-7 for the B leaf in the list.

During discovery, messages may appear stating that the leaves are inactive. These messages can be ignored.

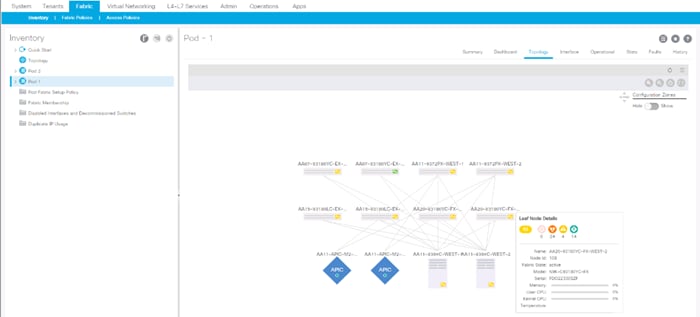

9. Click the Pod the leaves are associated with and select the Topology tab for the Pod. The discovered ACI Fabric topology will appear. It may take a few minutes for the new Nexus 93180YC-FX switches to appear and you will need to click Refresh for the complete topology to appear. You may also need to move the switches around to get the arrangement that you desire.

The topology shown in the screenshot above is that of the validation lab fabric, which contains 8 leaf switches, 2 spine switches, and 2 APICs. The environment uses ACI Multi-Pod (not explained in this document), which places the third APIC in a remotely connected ACI Pod. Cisco recommends a cluster of at least 3 APICs in a production environment.
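The serial-number matching and registration steps above can also be captured as data before anything is entered in the GUI. The following is a minimal Python sketch, not part of the validated procedure, that builds one registration body per leaf and rejects duplicate serial numbers or node IDs. The fabricNodeIdentP class and its serial, nodeId, and name attributes are assumptions recalled from the APIC object model, and the serial numbers and node names are hypothetical; confirm the object shape with the APIC API Inspector before using it against a fabric.

```python
import json


def node_registration_payload(serial, node_id, name):
    """Build a registration body for one discovered switch.

    Assumption: fabricNodeIdentP with serial/nodeId/name attributes is the
    object behind the GUI's Register action; confirm with API Inspector.
    """
    if not serial or not name:
        raise ValueError("serial and name are required")
    if int(node_id) <= 0:
        raise ValueError("pending switches show Node ID 0; assign a positive ID")
    return {
        "fabricNodeIdentP": {
            "attributes": {
                "serial": serial,
                "nodeId": str(node_id),
                "name": name,
            }
        }
    }


def registration_payloads(leaves):
    """leaves: iterable of (serial, node_id, name); rejects duplicates."""
    seen_serials, seen_ids = set(), set()
    payloads = []
    for serial, node_id, name in leaves:
        if serial in seen_serials or node_id in seen_ids:
            raise ValueError(f"duplicate serial or node ID: {serial}, {node_id}")
        seen_serials.add(serial)
        seen_ids.add(node_id)
        payloads.append(node_registration_payload(serial, node_id, name))
    return payloads


# Hypothetical serial numbers and names; node IDs 107-108 follow this guide's example.
new_leaves = [
    ("FDO00000AAA", 107, "leaf-a"),
    ("FDO00000BBB", 108, "leaf-b"),
]
for body in registration_payloads(new_leaves):
    print(json.dumps(body))
```

Keeping the serial-to-node mapping in one list makes it harder to register the A and B leaves with swapped IDs.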

Initial ACI Fabric Setup Verification

This section details the steps for the initial setup of the Cisco ACI Fabric, where the software release is validated, out of band management IPs are assigned to the new leaves, NTP setup is verified, and the fabric BGP route reflectors are verified.

Software Upgrade

To upgrade the software, follow these steps:

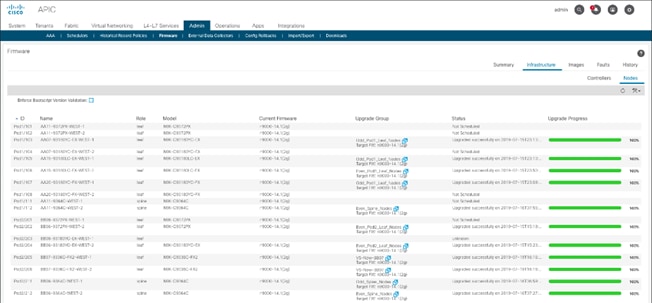

1. In the APIC GUI, select Admin -> Firmware.

2. This document was validated with ACI software release 4.1(2g). Select the Infrastructure tab within Firmware, and the Nodes sub-tab under Infrastructure. All switches should show the same firmware release and the release version should be at minimum n9000-14.1(2g). The switch software version should also correlate with the APIC version.

3. Click Admin > Firmware > Controller Firmware. If the APICs are not all at the same release of at least 4.1(2g), or the switches are not at least at n9000-14.1(2g), follow the Cisco APIC Management, Installation, Upgrade, and Downgrade Guide to upgrade both the APICs and the switches.
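The release checks in the steps above amount to comparing version strings. The following is a minimal Python sketch of that comparison, assuming release strings have the form shown in this guide, such as 4.1(2g) and n9000-14.1(2g). The rule that a switch release corresponds to the APIC release with 10 added to the major number is an assumption generalized from that single pair; the function names are illustrative only.

```python
import re

# Matches "4.1(2g)" and "n9000-14.1(2g)": major.minor(maintenance + patch letters)
_RELEASE = re.compile(r"^(?:n9000-)?(\d+)\.(\d+)\((\d+)([a-z]*)\)$")


def parse_release(text):
    """Return (major, minor, maintenance, patch) for a release string."""
    match = _RELEASE.match(text.strip())
    if match is None:
        raise ValueError(f"unrecognized release string: {text!r}")
    major, minor, maintenance, patch = match.groups()
    return (int(major), int(minor), int(maintenance), patch)


def meets_minimum(release, minimum):
    """True if release is the same as or newer than minimum."""
    return parse_release(release) >= parse_release(minimum)


def switch_matches_apic(switch_release, apic_release):
    """True if the switch release correlates with the APIC release.

    Assumption: switch major number = APIC major number + 10.
    """
    switch = parse_release(switch_release)
    apic = parse_release(apic_release)
    return switch == (apic[0] + 10, apic[1], apic[2], apic[3])


print(meets_minimum("4.1(2g)", "4.1(2g)"))
print(switch_matches_apic("n9000-14.1(2g)", "4.1(2g)"))
```

Patch letters are compared as plain strings, which is adequate for single-letter patches but has not been checked against every release naming variant.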

Set Up Out-of-Band Management IP Addresses for New Leaf Switches

To set up out-of-band management IP addresses, follow these steps:

1. To add Out-of-Band management interfaces for all the switches in the ACI Fabric, select Tenants -> mgmt.

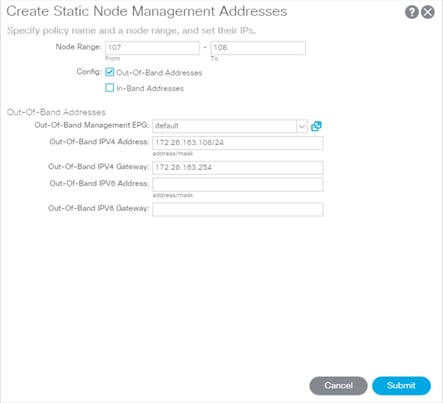

2. Expand Tenant mgmt on the left. Right-click Node Management Addresses and select Create Static Node Management Addresses.

3. Enter the node number range for the new leaf switches (107-108 in this example).

4. Select the checkbox for Out-of-Band Addresses.

5. Select default for Out-of-Band Management EPG.

6. Considering that the IPs will be applied in a consecutive range of two IPs, enter a starting IP address and netmask in the Out-of-Band IPV4 Address field.

7. Enter the Out-of-Band management gateway address in the Gateway field.

8. Click Submit, then click YES.

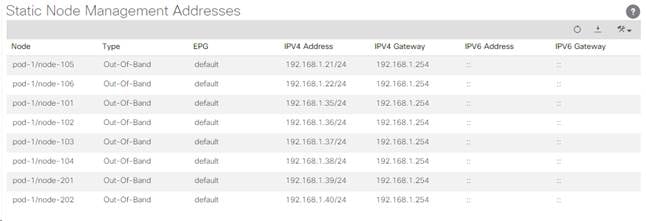

9. On the left, expand Node Management Addresses and select Static Node Management Addresses. Verify the mapping of IPs to switching nodes.

10. Direct out-of-band access to the switches is now available using SSH.
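Because the out-of-band addresses are applied as a consecutive range beginning at the starting IP, it can help to work out the node-to-address mapping before submitting the form. The following is a minimal Python sketch using only the standard ipaddress module. The addresses come from a documentation range and are hypothetical, as is the function name; the node range 107-108 follows the example in step 3.

```python
import ipaddress


def oob_address_plan(first_node, last_node, start_cidr, gateway):
    """Map each node ID to a consecutive address starting at start_cidr."""
    start = ipaddress.ip_interface(start_cidr)
    network = start.network
    gw = ipaddress.ip_address(gateway)
    if gw not in network:
        raise ValueError(f"gateway {gw} is outside {network}")
    plan = {}
    for offset, node in enumerate(range(first_node, last_node + 1)):
        address = start.ip + offset
        # Reject addresses that leave the subnet or collide with gateway/broadcast
        if address not in network or address in (gw, network.broadcast_address):
            raise ValueError(f"no usable address for node {node}: {address}")
        plan[node] = f"{address}/{network.prefixlen}"
    return plan


# Hypothetical addressing; substitute the out-of-band management subnet in use.
for node, address in oob_address_plan(107, 108, "192.0.2.17/24", "192.0.2.1").items():
    print(node, address)
```

The resulting mapping can be compared against the Static Node Management Addresses list in step 9.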

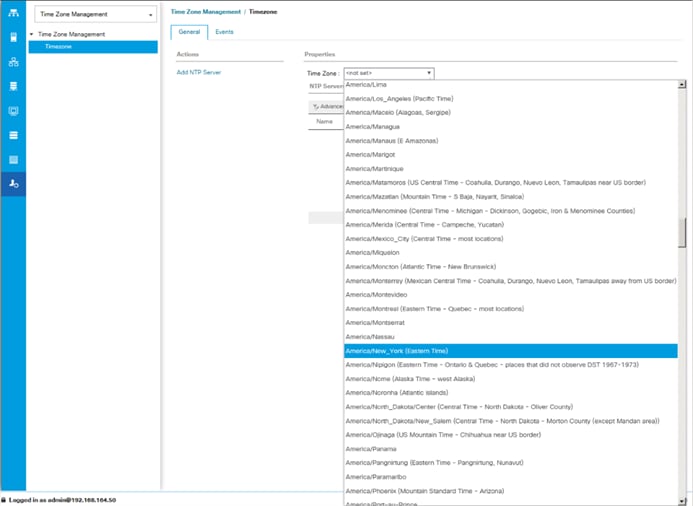

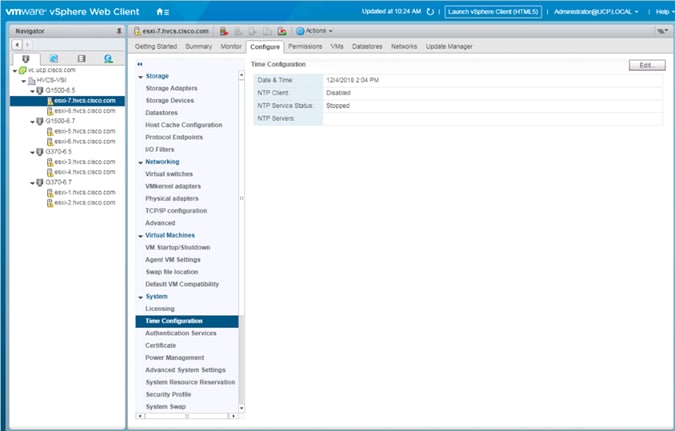

Verify Time Zone and NTP Server

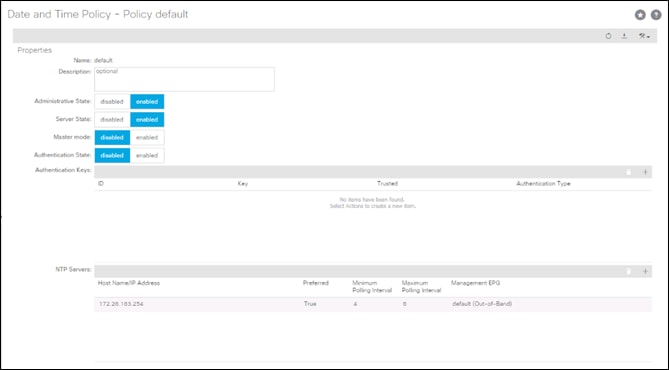

This procedure will allow customers to verify setup of an NTP server for synchronizing the fabric time. To verify the time zone and NTP server set up, follow these steps:

1. To verify NTP setup in the fabric, select and expand Fabric -> Fabric Policies -> Policies -> Pod -> Date and Time.

2. Select default. In the Datetime Format - default pane, verify the correct Time Zone is selected and that Offset State is enabled. Adjust as necessary and click Submit and Submit Changes.

3. On the left, select Policy default. Verify that at least one NTP Server is listed.

4. If desired, select enabled for Server State to enable the ACI fabric switches as NTP servers. Click Submit.

5. If necessary, on the right use the + sign to add NTP servers accessible on the out of band management subnet. Enter an IP address accessible on the out of band management subnet and select the default (Out-of-Band) Management EPG. Click Submit to add the NTP server. Repeat this process to add all NTP servers.

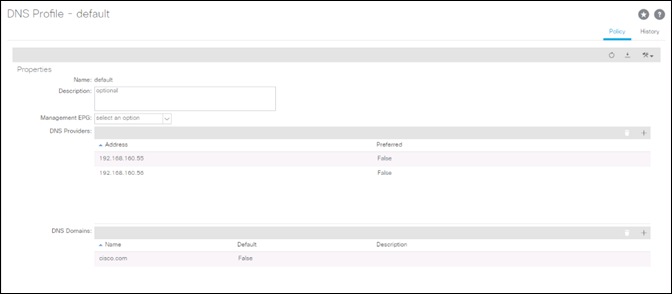

Verify Domain Name Servers

To verify optional DNS in the ACI fabric, follow these steps:

1. Select and expand Fabric > Fabric Policies > Policies > Global > DNS Profiles > default.

2. Verify the DNS Providers and DNS Domains.

3. If necessary, in the Management EPG drop-down, select the default (Out-of-Band) Management EPG. Use the + signs to the right of DNS Providers and DNS Domains to add DNS servers and the DNS domain name. Note that the DNS servers should be reachable from the out of band management subnet. Click SUBMIT to complete the DNS configuration.

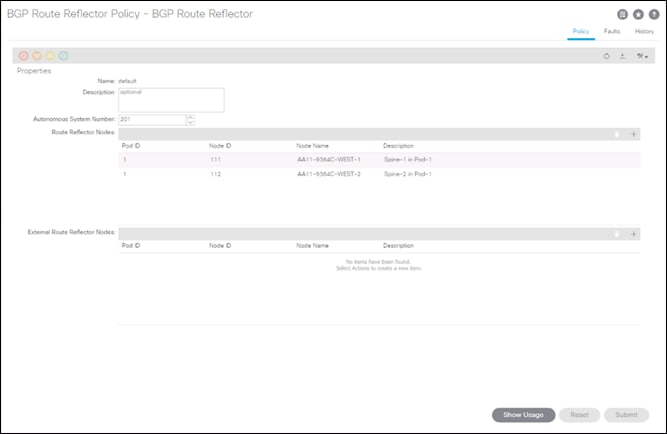

Verify BGP Route Reflectors

In this ACI deployment, both of the spine switches are set up as BGP route-reflectors to distribute external routes throughout the fabric. To verify the BGP Route Reflector, follow these steps:

1. Select and expand System -> System Settings -> BGP Route Reflector.

2. Verify that a unique Autonomous System Number has been selected for this ACI fabric. If necessary, use the + sign on the right to add the two spines to the list of Route Reflector Nodes. Click Submit to complete configuring the BGP Route Reflector.

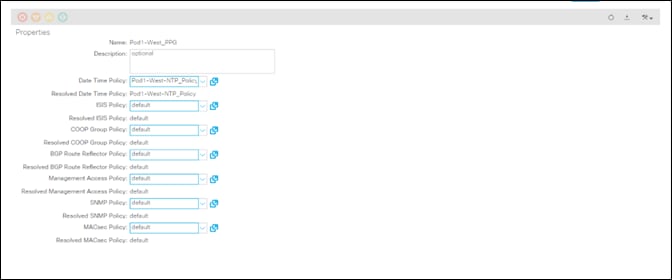

3. To verify the BGP Route Reflector has been enabled, select and expand Fabric -> Fabric Policies -> Pods -> Policy Groups. Under Policy Groups make sure a policy group has been created and select it. The BGP Route Reflector Policy field should show “default.”

4. If a Policy Group has not been created, on the left, right-click Policy Groups under Pod Policies and select Create Pod Policy Group. In the Create Pod Policy Group window, provide an appropriate Policy Group name. Select the default BGP Route Reflector Policy. Click Submit to complete creating the Policy Group.

5. On the left expand Pods -> Profiles and select Pod Profile default.

6. Verify that the created Policy Group or the Fabric Policy Group identified above is selected. If the Fabric Policy Group is not selected, view the drop-down list to select it and click Submit.

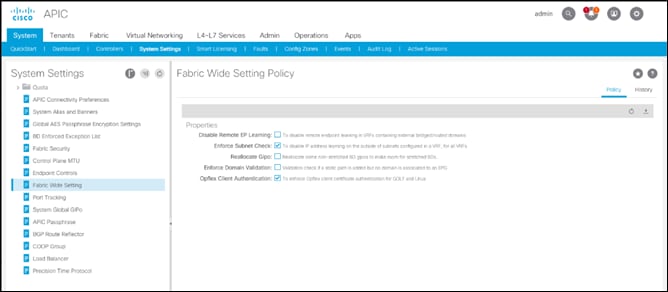

Verify Fabric Wide Enforce Subnet Check for IP and MAC Learning

In this ACI deployment, Enforce Subnet Check for IP and MAC Learning should be enabled. To verify this setting, follow these steps:

1. Select and expand System -> System Settings -> Fabric Wide Setting.

2. Ensure that Enforce Subnet Check is selected.

3. Select OpFlex Client Authentication.

Required if you’re configuring Cisco AVE.

4. Click Submit.

Fabric Access Policy Setup

This section details the steps to create various access policies creating parameters for CDP, LLDP, LACP, etc. These policies are used during vPC and VMM domain creation. In an existing fabric, these policies may already exist.

The following policies will be set up during the Fabric Access Policy Setup:

| Access Interface Policies | Purpose | Policy Name |
| --- | --- | --- |
| Link Level Policies | Sets link to 40Gbps | 40Gbps-Link |
| | Sets link to 25Gbps | 25Gbps-Link |
| | Sets link to 10Gbps | 10Gbps-Link |
| | Sets link to 1Gbps | 1Gbps-Link |
| CDP Interface Policies | Enables CDP | CDP-Enabled |
| | Disables CDP | CDP-Disabled |
| LLDP Interface Policies | Enables LLDP | LLDP-Enabled |
| | Disables LLDP | LLDP-Disabled |
| Port Channel Policies | Sets LACP Mode | LACP-Active |
| | Sets MAC Pinning | MAC-Pinning |
| Layer 2 Interface Policies | Specifies VLAN Scope as Port Local | VLAN-Scope-Local |
| | Specifies VLAN Scope as Global | VLAN-Scope-Global |
| Firewall Policies | Disables Firewall | Firewall-Disabled |
| Spanning Tree Policies | Enables BPDU Filter and Guard | BPDU-FG-Enabled |
| | Disables BPDU Filter and Guard | BPDU-FG-Disabled |

The existing policies can be used if configured the same way as listed. To define fabric access policies, follow these steps:

1. Log in to the APIC GUI.

2. In the APIC UI, select and expand Fabric -> Access Policies -> Policies -> Interface.

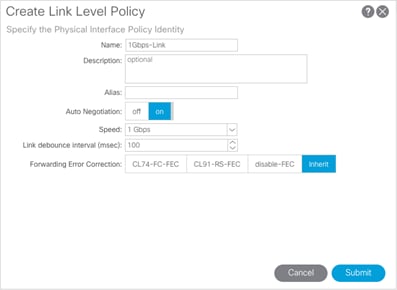

Create Link Level Policies

This procedure will create link level policies for setting up the 1Gbps, 10Gbps, 25Gbps, and 40Gbps link speeds. To create the link level policies, follow these steps:

1. In the left pane, right-click Link Level and select Create Link Level Policy.

2. Name the policy as 1Gbps-Link and select the 1Gbps Speed.

3. Click Submit to complete creating the policy.

4. In the left pane, right-click Link Level and select Create Link Level Policy.

5. Name the policy 10Gbps-Link and select the 10Gbps Speed.

6. Click Submit to complete creating the policy.

7. In the left pane, right-click Link Level and select Create Link Level Policy.

8. Name the policy 25Gbps-Link and select the 25Gbps Speed.

9. Click Submit to complete creating the policy.

10. In the left pane, right-click Link Level and select Create Link Level Policy.

11. Name the policy 40Gbps-Link and select the 40Gbps Speed.

12. Click Submit to complete creating the policy.

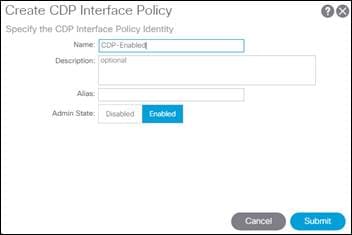

Create CDP Policy

This procedure creates policies to enable or disable CDP on a link. To create a CDP policy, follow these steps:

1. In the left pane, right-click CDP Interface and select Create CDP Interface Policy.

2. Name the policy as CDP-Enabled and enable the Admin State.

3. Click Submit to complete creating the policy.

4. In the left pane, right-click the CDP Interface and select Create CDP Interface Policy.

5. Name the policy CDP-Disabled and disable the Admin State.

6. Click Submit to complete creating the policy.

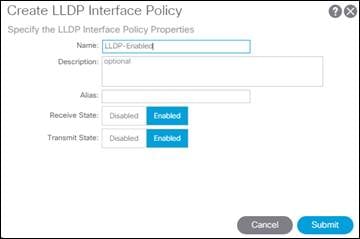

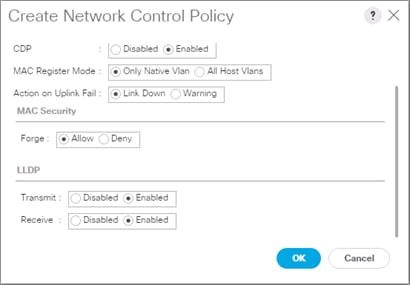

Create LLDP Interface Policies

This procedure will create policies to enable or disable LLDP on a link. To create an LLDP Interface policy, follow these steps:

1. In the left pane, right-click LLDP Interface and select Create LLDP Interface Policy.

2. Name the policy as LLDP-Enabled and enable both Transmit State and Receive State.

3. Click Submit to complete creating the policy.

4. In the left pane, right-click LLDP Interface and select Create LLDP Interface Policy.

5. Name the policy as LLDP-Disabled and disable both the Transmit State and Receive State.

6. Click Submit to complete creating the policy.

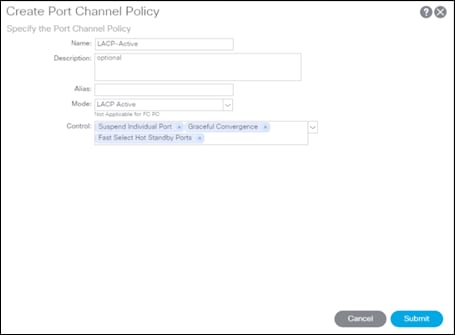

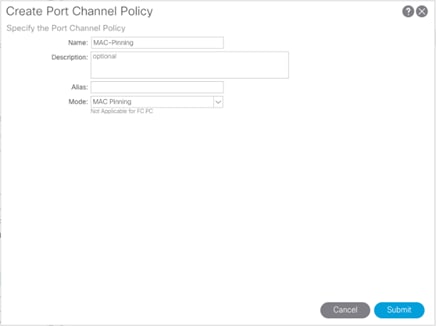

Create Port Channel Policy

This procedure will create policies to set LACP active mode configuration and the MAC-Pinning mode configuration. To create the Port Channel policy, follow these steps:

1. In the left pane, right-click Port Channel and select Create Port Channel Policy.

2. Name the policy as LACP-Active and select LACP Active for the Mode. Do not change any of the other values.

3. Click Submit to complete creating the policy.

4. In the left pane, right-click Port Channel and select Create Port Channel Policy.

5. Name the policy as MAC-Pinning and select MAC Pinning for the Mode. Do not change any of the other values.

6. Click Submit to complete creating the policy.

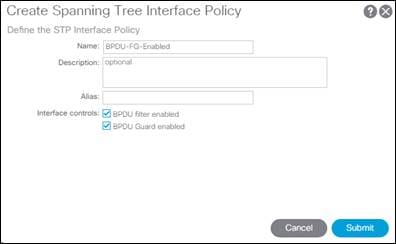

Create BPDU Filter/Guard Policies

This procedure will create policies to enable or disable BPDU filter and guard. To create a BPDU filter/Guard policy, follow these steps:

1. In the left pane, right-click Spanning Tree Interface and select Create Spanning Tree Interface Policy.

2. Name the policy as BPDU-FG-Enabled and select both the BPDU filter and BPDU Guard Interface Controls.

3. Click Submit to complete creating the policy.

4. In the left pane, right-click Spanning Tree Interface and select Create Spanning Tree Interface Policy.

5. Name the policy as BPDU-FG-Disabled and make sure both the BPDU filter and BPDU Guard Interface Controls are cleared.

6. Click Submit to complete creating the policy.

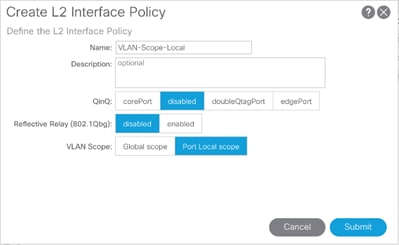

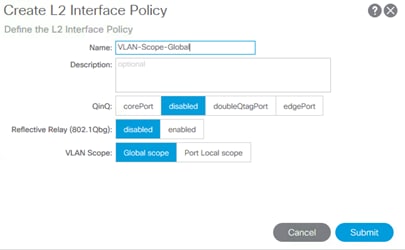

Create VLAN Scope Policy

To create policies to enable port local scope for all the VLANs, follow these steps:

1. In the left pane, right-click the L2 Interface and select Create L2 Interface Policy.

2. Name the policy as VLAN-Scope-Local and make sure Port Local scope is selected for VLAN Scope. Do not change any of the other values.

3. Click Submit to complete creating the policy.

4. Repeat steps 1-3 to create a VLAN-Scope-Global Policy and make sure Global scope is selected for VLAN Scope. Do not change any of the other values.

Create Firewall Policy

To create policies to disable a firewall, follow these steps:

1. In the left pane, right-click Firewall and select Create Firewall Policy.

2. Name the policy Firewall-Disabled and select Disabled for Mode. Do not change any of the other values.

3. Click Submit to complete creating the policy.
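The interface policies created in the procedures above can also be expressed as data, which is useful for review or for comparing against an existing fabric. The following is a minimal Python sketch, not part of the validated procedure, that builds a single JSON-style body for the policies in the table at the start of this section. The class names (infraInfra, fabricHIfPol, cdpIfPol, lldpIfPol, lacpLagPol, stpIfPol, l2IfPol) and the attribute names and values are assumptions recalled from the APIC object model; confirm them with the APIC API Inspector before posting anything. The Firewall policy is intentionally left out.

```python
import json


def _policy(cls, **attributes):
    """Wrap attributes in the {class: {"attributes": ...}} managed-object shape."""
    return {cls: {"attributes": attributes}}


def build_interface_policies():
    """Return one body holding the interface policies named in this section."""
    children = []
    # Link level policies: 1Gbps-Link, 10Gbps-Link, 25Gbps-Link, 40Gbps-Link
    for speed in ("1G", "10G", "25G", "40G"):
        children.append(_policy("fabricHIfPol", name=f"{speed}bps-Link", speed=speed))
    # CDP and LLDP policies in enabled and disabled variants
    for state in ("enabled", "disabled"):
        label = state.capitalize()
        children.append(_policy("cdpIfPol", name=f"CDP-{label}", adminSt=state))
        children.append(
            _policy("lldpIfPol", name=f"LLDP-{label}", adminRxSt=state, adminTxSt=state)
        )
    # Port channel policies
    children.append(_policy("lacpLagPol", name="LACP-Active", mode="active"))
    children.append(_policy("lacpLagPol", name="MAC-Pinning", mode="mac-pin"))
    # Spanning tree interface policies (assumed ctrl flag spelling)
    children.append(_policy("stpIfPol", name="BPDU-FG-Enabled", ctrl="bpdu-filter,bpdu-guard"))
    children.append(_policy("stpIfPol", name="BPDU-FG-Disabled", ctrl=""))
    # Layer 2 interface policies
    children.append(_policy("l2IfPol", name="VLAN-Scope-Local", vlanScope="portlocal"))
    children.append(_policy("l2IfPol", name="VLAN-Scope-Global", vlanScope="global"))
    return {"infraInfra": {"attributes": {}, "children": children}}


print(json.dumps(build_interface_policies(), indent=2))
```

Generating the policy names from one place keeps them consistent with the names referenced later during vPC and VMM domain creation.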

Create Virtual Port Channels (vPCs)

This section details the steps to set up vPCs for connectivity to the In-Band Management Network and Cisco UCS.

vPC - Management Switch

To set up a vPC for connectivity to the existing In-Band Management Network, follow these steps:

This deployment guide covers the configuration for a single, pre-existing Cisco Nexus management switch. You can adjust the management configuration depending on your connectivity setup. The In-Band Management Network provides connectivity of Management Virtual Machines and Hosts in the ACI fabric to existing services on the In-Band Management network outside of the ACI fabric. Layer 3 connectivity outside of the ACI Fabric is assumed between the In-Band and Out-of-Band Management networks. This setup creates management networks that are physically isolated from tenant networks. In this validation, a 1GE vPC from two 1GE capable leaf switches in the fabric is connected to a port-channel on a Nexus 5K switch outside the fabric. Multiple upstream management switches are supported, but only one is used in this example.

Table 11 VLAN for Incoming IB-MGMT

| Name | VLAN |
| --- | --- |
| Site1-IB-MGMT | <119> |

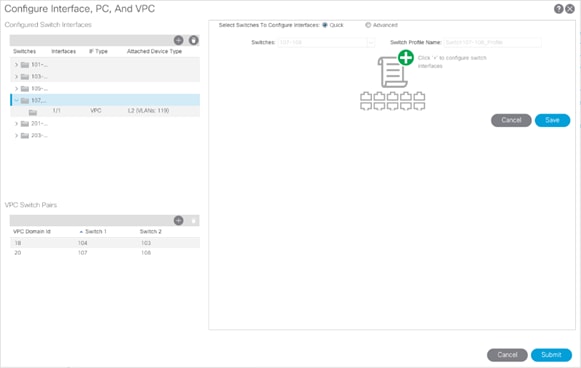

4. In the APIC GUI, at the top select Fabric -> Access Policies -> Quick Start.

5. In the right pane select Configure an interface, PC and VPC.

6. In the configuration window, configure a VPC domain between the leaf switches by clicking “+” under VPC Switch Pairs. If a VPC Domain already exists between the two switches being used for this vPC, skip to step 7.

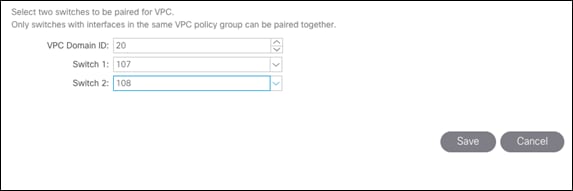

7. Enter a VPC Domain ID (20 in this example).

8. From the drop-down list, select Switch A and Switch B IDs to select the two leaf switches.

9. Click Save.

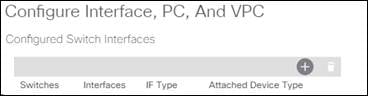

10. If a profile for the two leaf switches being used does not already exist under Configured Switch Interfaces, click the “+” under Configured Switch Interfaces. If the profile does exist, select it and proceed to step 10.

11. From the Switches drop-down list on the right, select both the leaf switches being used for this vPC.

12. Leave the system generated Switch Profile Name in place.

13. Click the big green “+” on the right to configure switch interfaces.

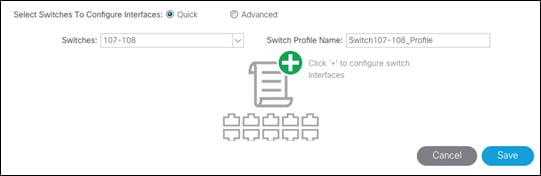

14. Configure various fields as shown in the figure below. In this screen shot, port 1/1 on both leaf switches is connected to a Nexus switch using 1Gbps links.

15. Click Save.

16. Click Save again to finish the configuring switch interfaces.

17. Click Submit.

To validate the configuration, log into the Nexus switch (IP listed under Fabric -> Inventory -> [pod] -> [leaf] -> Management Interfaces) and verify the port-channel is up (show port-channel summary).

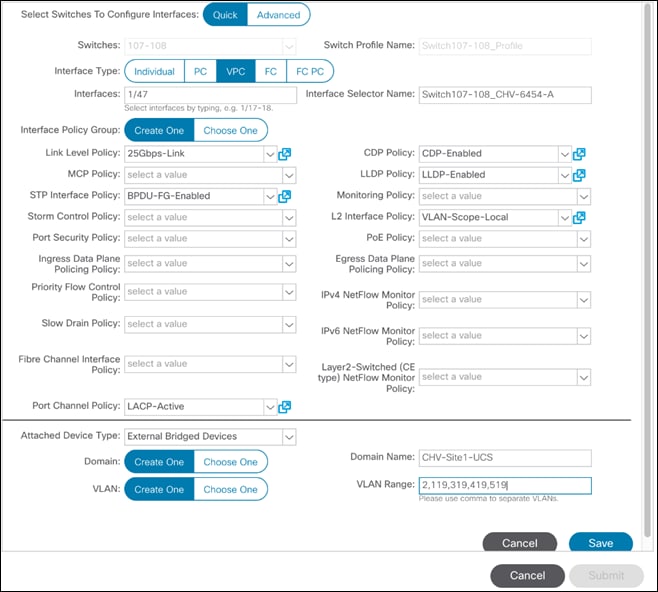

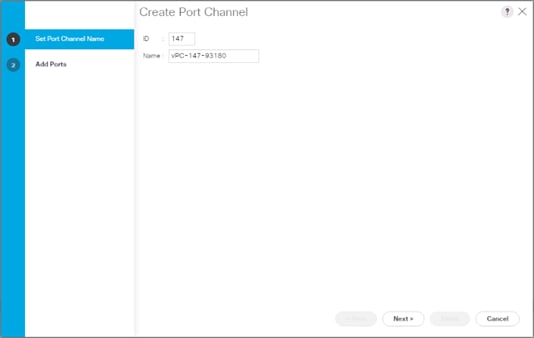

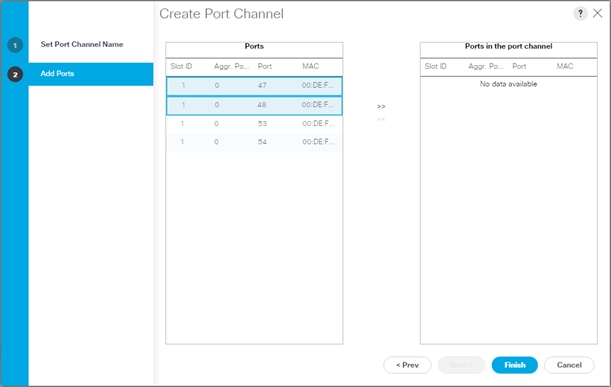

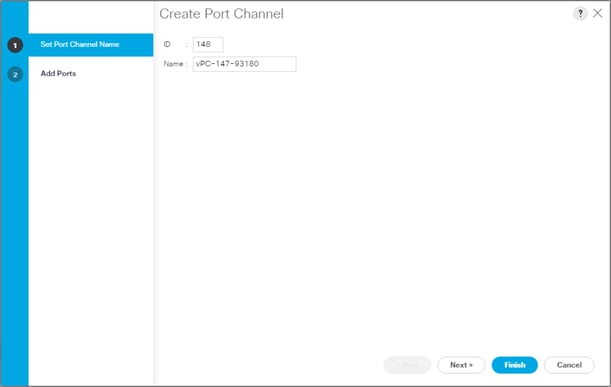

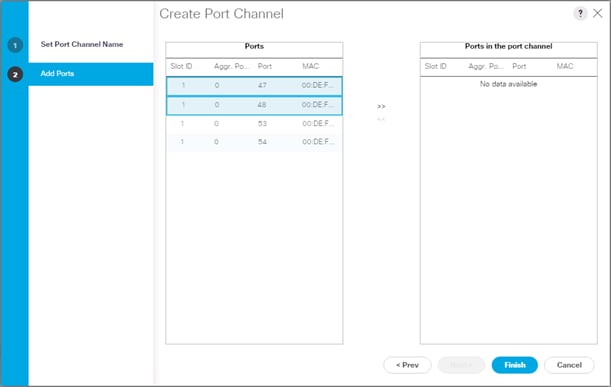

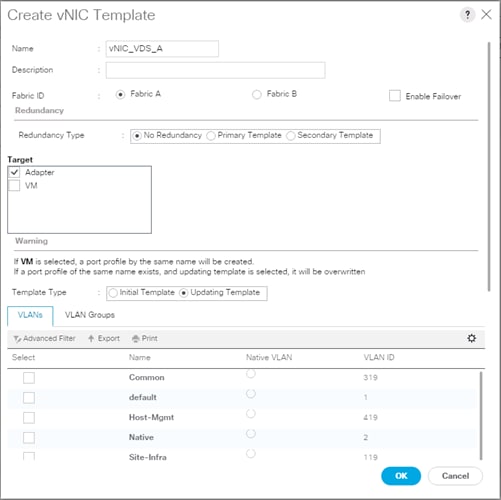

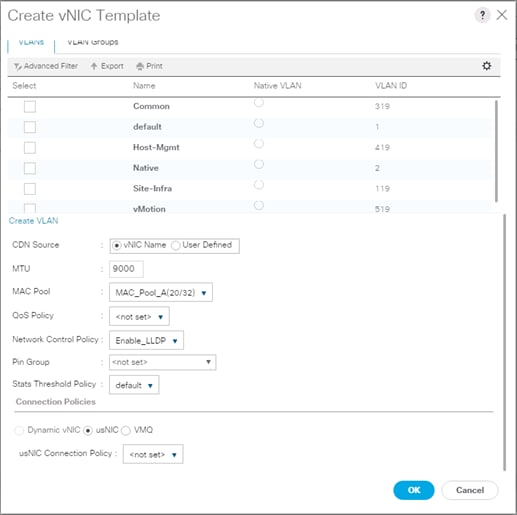

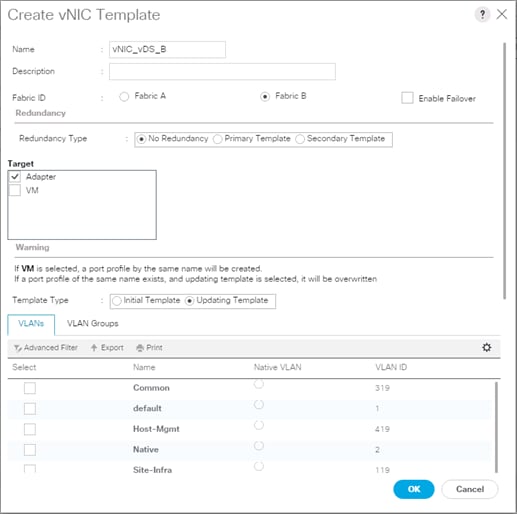

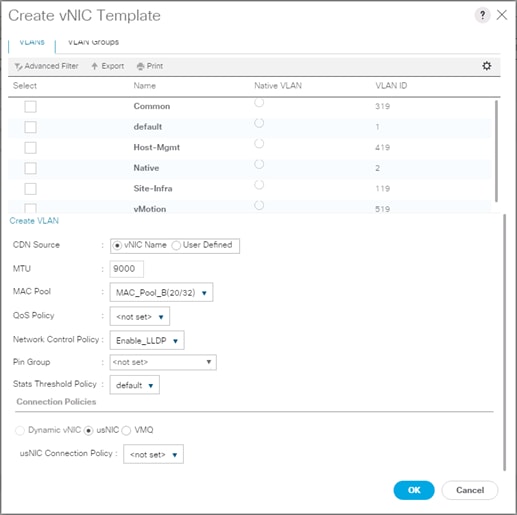

vPC – Cisco UCS Fabric Interconnects

To set up vPCs for connectivity to the Cisco UCS Fabric Interconnects, follow these steps:

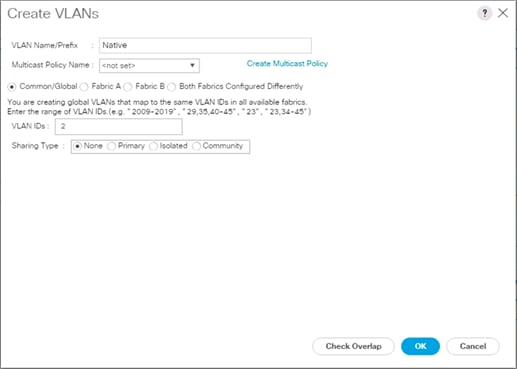

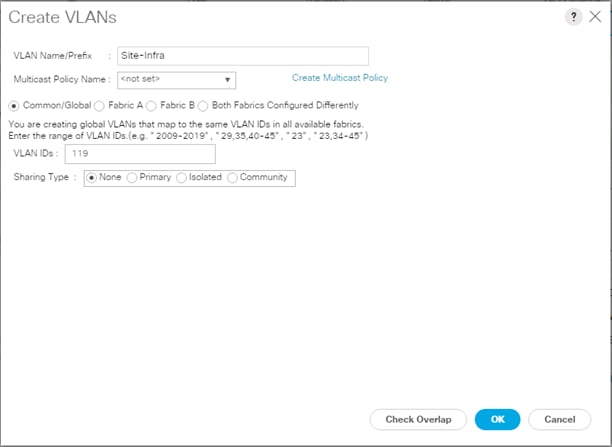

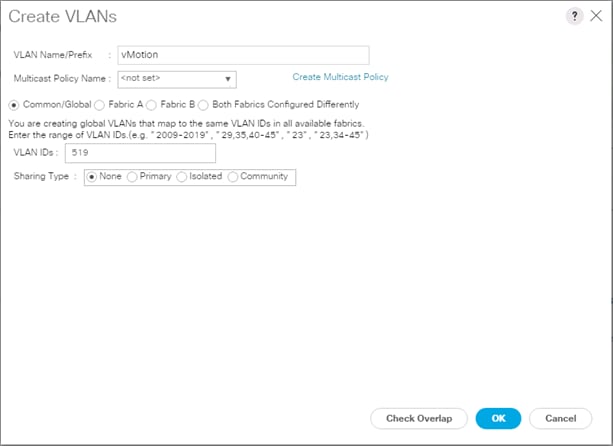

Figure 3 VLANs Configured for Cisco UCS

Table 12 VLANs for Cisco UCS Hosts

| Name | VLAN |
| --- | --- |
| Native | <2> |
| Site1-Infra | <119> |
| Common | <319> |
| Internal-Infra | <419> |
| vMotion | <519> |

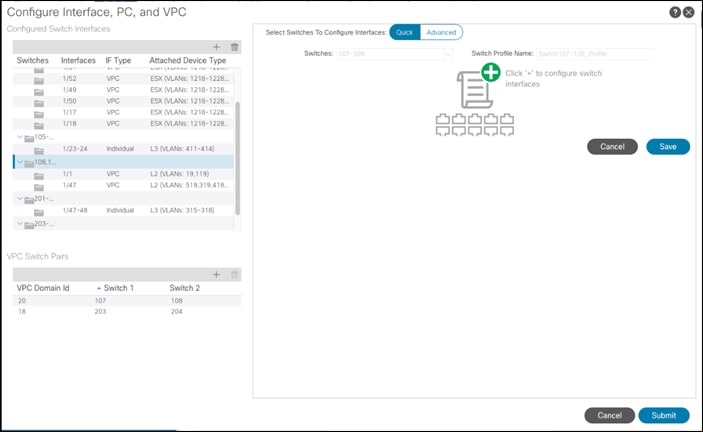

1. In the APIC GUI, select Fabric -> Access Policies -> Quick Start.

2. In the right pane, select Configure an interface, PC and VPC.

3. In the configuration window, select the Configured Switch Interfaces line for the two 93180YC-FX switches.

4. Click the “+” icon to add switch interfaces.

5. Configure various fields as shown in the figure below. In this screenshot, port 1/47 on both leaf switches is connected to UCS Fabric Interconnect A using 25Gbps links.

6. Click Save.

7. Click Save again to finish configuring the switch interfaces.

8. Click Submit.

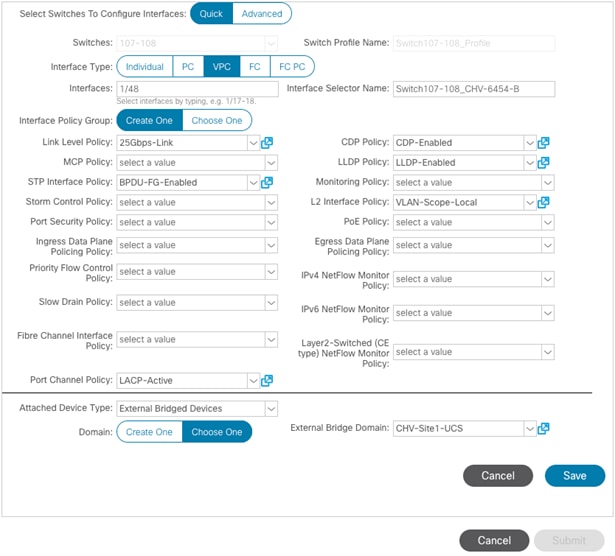

9. From the right pane, select Configure an interface, PC and VPC.

10. Select the switches configured in the last step under Configured Switch Interfaces.

11. Click the “+” icon on the right to add switch interfaces.

12. Configure various fields as shown in the screenshot. In this screenshot, port 1/48 on both leaf switches is connected to the Cisco UCS Fabric Interconnect B using 25Gbps links. Instead of creating a new domain, the External Bridged Device created in the last step (CH-Site1-UCS) is attached to the FI-B as shown below.

13. Click Save.

14. Click Save again to finish configuring the switch interfaces.

15. Click Submit.

16. Optional: Repeat this procedure to configure any additional UCS domains. For a uniform configuration, the External Bridge Domain (UCS) will be utilized for all the Fabric Interconnects.

Deploy Shared Layer 3 Connectivity to Outside Networks – Pod-1

Follow the procedures outlined in this section to establish Layer 3 connectivity or a Shared L3Out from the ACI fabric to networks outside the ACI fabric.

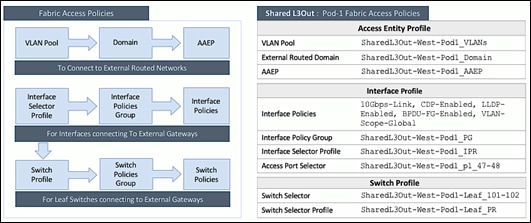

Deployment Overview

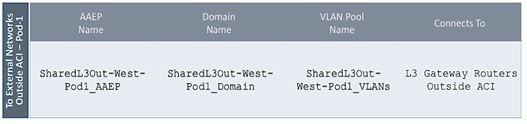

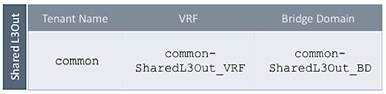

In this design, the Shared L3Out connection is established in the system-defined common Tenant so that it can be used by all tenants in the ACI fabric. Tenants must not use overlapping addresses when connecting to the outside networks using the same shared L3Out connection. The connection uses four 10GbE interfaces between the border leaf switches deployed earlier and a pair of Nexus 7000 switches. The Nexus 7000 routers serve as the external gateway to the networks outside the fabric. OSPF is used as the routing protocol to exchange routes between the two networks. Some highlights of this connectivity are:

· A pair of Nexus 7000 routers is connected to a pair of Nexus ACI leaf switches – using a total of 4 links.

· VLANs are used for connectivity across the 4 links – using a total of 4 VLANs. VLANs are configured on separate sub-interfaces.

· Fabric Access Policies are configured on ACI Leaf switches to connect to the External Routed domain (via Nexus 7000s) using VLAN pool (vlans: 311-314).

· A dedicated VRF common-SharedL3Out_VRF is configured in Tenant common for external connectivity.

· The shared Layer 3 Out created in common Tenant “provides” an external connectivity contract that can be “consumed” from any tenant.

· The Nexus 7000s are configured to originate and send a default route to the Nexus 9000 leaf switches using OSPF.

· ACI leaf switches advertise tenant subnets back to Nexus 7000 switches.

· In ACI 4.0 and later, ACI leaf switches can also advertise host routes if this option is enabled.
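The requirement that tenants not use overlapping addresses on the shared L3Out can be checked before any subnet is advertised. The following is a minimal Python sketch using the standard ipaddress module; the tenant names, subnets, and function name are hypothetical and serve only to illustrate the check.

```python
import ipaddress
from itertools import combinations


def find_overlaps(tenant_subnets):
    """Return (tenant, subnet, tenant, subnet) tuples for every overlapping pair.

    tenant_subnets maps a tenant name to a list of CIDR strings. Gateway-style
    entries such as 10.1.118.254/24 are accepted and reduced to their network.
    """
    entries = [
        (tenant, ipaddress.ip_network(cidr, strict=False))
        for tenant, cidrs in tenant_subnets.items()
        for cidr in cidrs
    ]
    conflicts = []
    for (tenant_a, net_a), (tenant_b, net_b) in combinations(entries, 2):
        # Only compare networks of the same IP version
        if net_a.version == net_b.version and net_a.overlaps(net_b):
            conflicts.append((tenant_a, str(net_a), tenant_b, str(net_b)))
    return conflicts


# Hypothetical tenants and subnets
planned = {
    "Tenant-A": ["10.1.118.0/24"],
    "Tenant-B": ["10.1.118.128/25", "10.2.0.0/24"],
}
for conflict in find_overlaps(planned):
    print("overlap:", conflict)
```

An empty result means no two planned subnets overlap; any reported pair should be readdressed before the tenants consume the shared L3Out contract.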

Create VLAN Pool for External Routed Domain

In this section, a VLAN pool is created to enable connectivity to the external networks, outside the ACI fabric. The VLANs in the pool are for the four links that connect ACI Border Leaf switches to the Nexus Gateway routers in the non-ACI portion of the customer’s network.

Setup Information

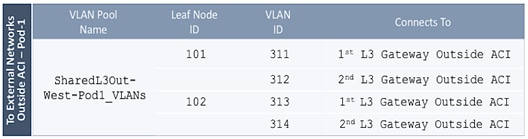

Table 13 VLAN Pool for Shared L3Out in Pod-1

Configuration references are included for Pod1 to align with an eventual Multi-Pod buildout involving two pods.

Deployment Steps

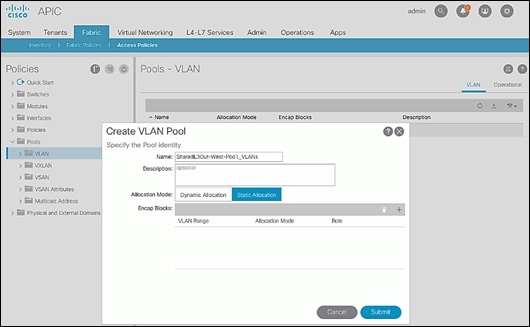

To configure a VLAN pool to connect to external gateway routers outside the ACI fabric, follow these steps:

1. Use a browser to navigate to the APIC GUI. Log in using the admin account.

2. From the top navigation menu, select Fabric > Access Policies.

3. From the left navigation pane, expand and select Pools > VLAN.

4. Right-click and select Create VLAN Pool.

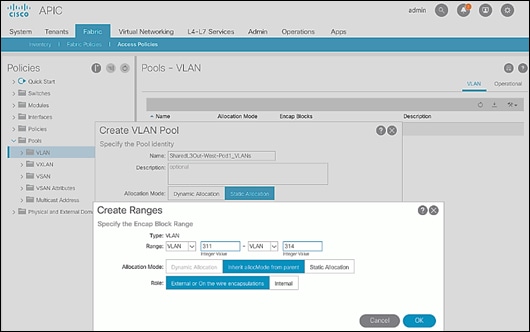

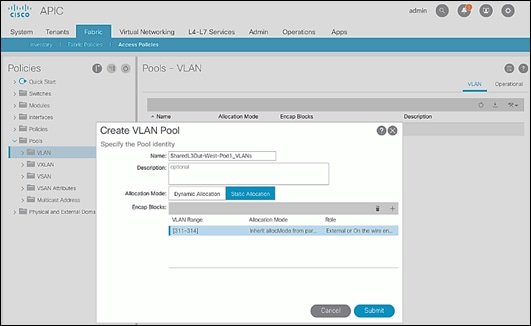

5. In the Create VLAN Pool pop-up window, specify a Name and for Allocation Mode, select Static Allocation.

6. For Encap Blocks, use the [+] button on the right to add VLANs to the VLAN Pool. In the Create Ranges pop-up window, configure the VLANs that need to be configured from the Border Leaf switches to the external gateways outside the ACI fabric. Leave the remaining parameters as is.

7. Click OK. Use the same VLAN ranges on the external gateway routers to connect to the ACI Fabric.

8. Click Submit to complete.
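The static VLAN pool created in the steps above can be described as a small data structure. The following is a minimal Python sketch that builds such a body for the VLAN range 311-314 used for the Shared L3Out in this design. The fvnsVlanInstP and fvnsEncapBlk class names and their attributes are assumptions recalled from the APIC object model, and the pool name is hypothetical; confirm the shape with the APIC API Inspector.

```python
import json


def vlan_pool_payload(name, first_vlan, last_vlan):
    """Build a static VLAN pool body with a single encap block.

    Assumption: fvnsVlanInstP/fvnsEncapBlk and the "vlan-<id>" encoding
    match the APIC object model.
    """
    if not 1 <= first_vlan <= last_vlan <= 4094:
        raise ValueError(f"invalid VLAN range: {first_vlan}-{last_vlan}")
    return {
        "fvnsVlanInstP": {
            "attributes": {"name": name, "allocMode": "static"},
            "children": [
                {
                    "fvnsEncapBlk": {
                        "attributes": {
                            "from": f"vlan-{first_vlan}",
                            "to": f"vlan-{last_vlan}",
                        }
                    }
                }
            ],
        }
    }


# Hypothetical pool name; VLANs 311-314 are the range given in this guide.
print(json.dumps(vlan_pool_payload("SharedL3Out-Pod1_VLANs", 311, 314), indent=2))
```

The same range must be configured on the external gateway routers, so keeping it in one place reduces the chance of a mismatch.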

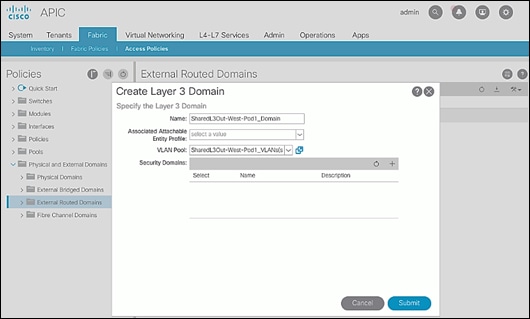

Configure Domain Type for External Routed Domain

To configure the domain type for the external domain, follow the procedures outlined in this section.

Deployment Steps

To specify the domain type for connecting to external gateway routers outside the ACI fabric, follow these steps:

1. Use a browser to navigate to the APIC GUI. Log in using the admin account.

2. From the top navigation menu, select Fabric > Access Policies.

3. From the left navigation pane, expand and select Physical and External Domains > External Routed Domains.

4. Right-click External Routed Domains and select Create Layer 3 Domain.

5. In the Create Layer 3 Domain pop-up window, specify a Name for the domain. For the VLAN Pool, select the previously created VLAN Pool from the drop-down list.

6. Click Submit to complete.

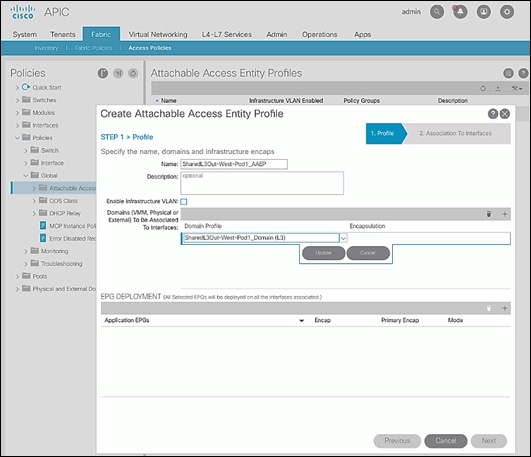

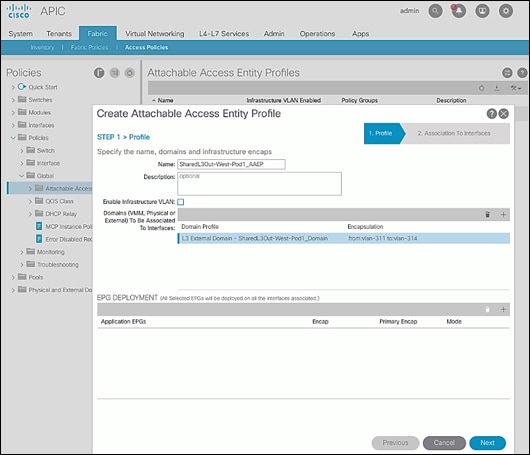

Create AAEP for External Routed Domain

To configure an Attachable Access Entity Profile (AAEP) for the external domain, follow the procedures outlined in this section.

Setup Information

Table 14 Attachable Access Entity Profile (AAEP) for Shared L3Out in Pod-1

Deployment Steps

To create an Attachable Access Entity Profile (AAEP) to connect to external gateway routers outside the ACI fabric, follow these steps:

1. Use a browser to navigate to the APIC GUI. Log in using the admin account.

2. From the top navigation menu, select Fabric > Access Policies.

3. From the left navigation pane, expand and select Policies > Global > Attachable Access Entity Profiles.

4. Right-click and select Create Attachable Access Entity Profile.

5. In the Create Attachable Access Entity Profile pop-up window, specify a Name.

6. For the Domains, click the [+] on the right-side of the window and select the previously created domain from the drop-down list below Domain Profile.

7. Click Update.

8. You should now see the selected domain and the associated VLAN Pool as shown below.

9. Click Next. This profile is not associated with any interfaces at this time – they can be associated once the interfaces are configured in the upcoming section.

10. Click Finish to complete.
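The Layer 3 domain and the AAEP are linked by reference: the domain points at the VLAN pool, and the AAEP points at the domain. The following is a minimal Python sketch of those two references, not part of the validated procedure. The class names (l3extDomP, infraRsVlanNs, infraAttEntityP, infraRsDomP) and the distinguished-name formats are assumptions recalled from the APIC object model, and all object names are hypothetical; confirm them with the APIC API Inspector.

```python
import json


def l3_domain_payload(domain_name, vlan_pool_name):
    """Layer 3 domain that references a static VLAN pool by DN (assumed format)."""
    return {
        "l3extDomP": {
            "attributes": {"name": domain_name},
            "children": [
                {
                    "infraRsVlanNs": {
                        "attributes": {
                            "tDn": f"uni/infra/vlanns-[{vlan_pool_name}]-static"
                        }
                    }
                }
            ],
        }
    }


def aaep_payload(aaep_name, domain_name):
    """AAEP that references the Layer 3 domain by DN (assumed format)."""
    return {
        "infraAttEntityP": {
            "attributes": {"name": aaep_name},
            "children": [
                {"infraRsDomP": {"attributes": {"tDn": f"uni/l3dom-{domain_name}"}}}
            ],
        }
    }


# Hypothetical names for illustration only
domain = l3_domain_payload("SharedL3Out-Pod1_Domain", "SharedL3Out-Pod1_VLANs")
aaep = aaep_payload("SharedL3Out-Pod1_AAEP", "SharedL3Out-Pod1_Domain")
print(json.dumps([domain, aaep], indent=2))
```

Seeing the references side by side makes the dependency order explicit: the VLAN pool must exist before the domain, and the domain before the AAEP.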

Configure Interfaces to External Routed Domain

To configure interfaces to the external routed domain, follow the procedures outlined in this section.

Setup Information

· Border Leaf switches (Node ID: 101,102) in Pod-1 connect to External Gateways (Nexus 7000 series switches) using 10Gbps links, on ports 1/47 and 1/48.

Figure 4 Fabric Access Policies for Shared L3Out in Pod-1

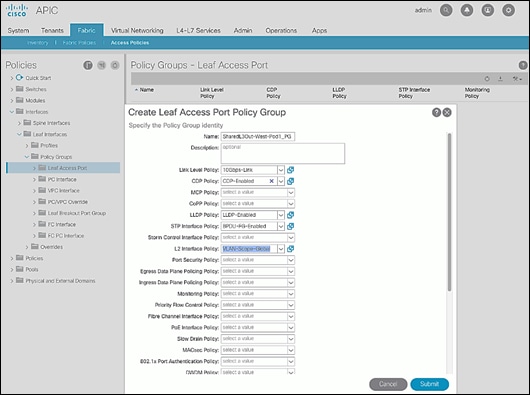

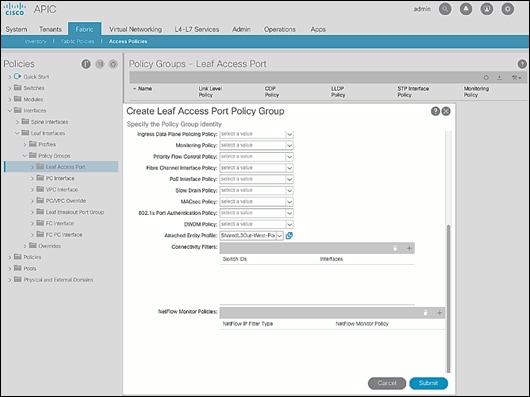

Create Interface Policy Group for Interfaces to External Routed Domain

To create an interface policy group to connect to external gateway routers outside the ACI fabric, follow these steps:

1. Use a browser to navigate to the APIC GUI. Log in using the admin account.

2. From the top navigation menu, select Fabric > Access Policies.

3. From the left navigation pane, expand and select Interfaces > Leaf Interfaces > Policy Groups > Leaf Access Port.

4. Right-click and select Create Leaf Access Port Policy Group.

5. In the Create Leaf Access Port Policy Group pop-up window, specify a Name and select the applicable interface policies from the drop-down list for each field.

6. For the Attached Entity Profile, select the previously created AAEP for the external routed domain.

7. Click Submit to complete.

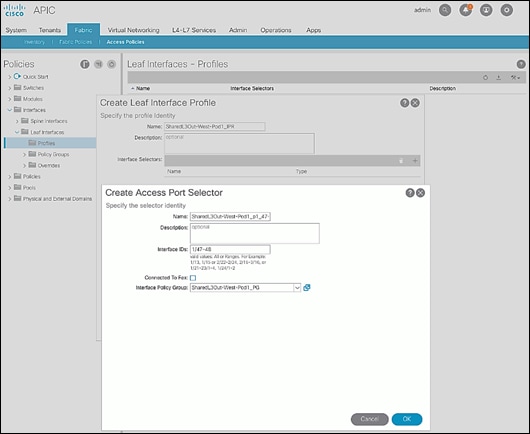

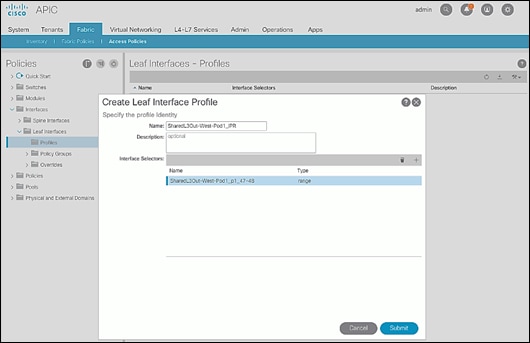

Create Interface Profile for Interfaces to External Routed Domain

To create an interface profile to connect to external gateway routers outside the ACI fabric, follow these steps:

1. Use a browser to navigate to the APIC GUI. Log in using the admin account.

2. From the top navigation menu, select Fabric > Access Policies.

3. From the left navigation menu, expand and select Interfaces > Leaf Interfaces > Profiles.

4. Right-click and select Create Leaf Interface Profile.

5. In the Create Leaf Interface Profile pop-up window, specify a Name. For Interface Selectors, click the [+] to select access ports to apply interface policies to. In this case, the interfaces are access ports that connect Border Leaf switches to gateways outside ACI.

6. In the Create Access Port Selector pop-up window, specify a selector Name. For the Interface IDs, specify the access ports connecting to the two external gateways. For the Interface Policy Group, select the previously created Policy Group from the drop-down list.

7. Click OK to close the Create Access Port Selector pop-up window.

8. Click Submit to complete.

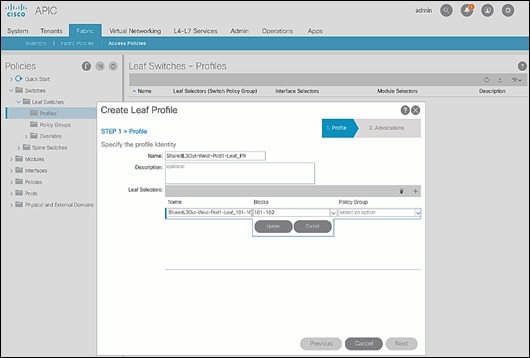

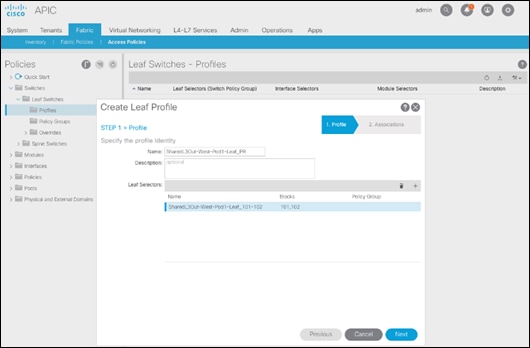

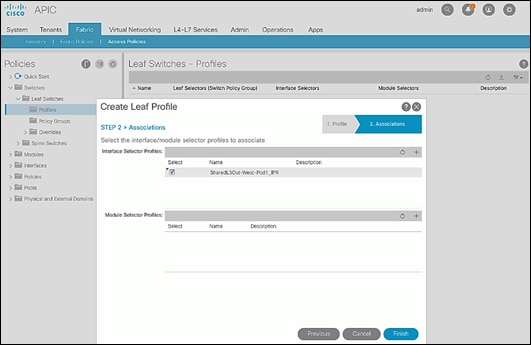

Create Leaf Switch Profile to External Routed Domain

To create a leaf switch profile to connect to external gateway routers outside the ACI fabric, follow these steps:

1. Use a browser to navigate to the APIC GUI. Log in using the admin account.

2. From the top navigation menu, select Fabric > Access Policies.

3. From the left navigation menu, expand and select Switches > Leaf Switches > Profiles.

4. Right-click and select Create Leaf Profile.

5. In the Create Leaf Profile pop-up window, specify a profile Name. For Leaf Selectors, click the [+] to select the Leaf switches to apply the policies to. In this case, the Leaf switches are the Border Leaf switches that connect to the gateways outside ACI.

6. Specify a Leaf Selector Name. For Blocks, select the Node IDs of the Border Leaf switches from the drop-down list.

7. Click Update.

8. Click Next.

9. In the Associations window, select the previously created Interface Selector Profiles from the list.

10. Click Finish to complete.

Configure Tenant Networking for Shared L3Out

To configure tenant networking to connect to networks outside the ACI fabric, follow the procedures outlined in this section.

Setup Information

Figure 5 Tenant Networking for Shared L3Out

Deployment Steps

To configure tenant networking for the Shared L3Out for connectivity outside the ACI fabric, follow these steps:

1. Use a browser to navigate to the APIC GUI. Log in using the admin account.

2. From the top navigation menu, select Tenants > common.

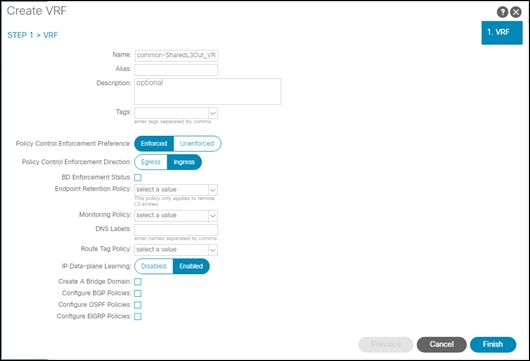

3. From the left navigation pane, select and expand Tenant common > Networking > VRFs.

4. Right-click and select Create VRF.

5. In the Create VRF pop-up window, STEP 1 > VRF, specify a Name (for example, common-SharedL3Out_VRF).

6. Deselect the check box for Create a Bridge Domain.

7. Click Finish.

Configure External Routed Networks under Tenant Common

To configure external routed networks under Tenant Common, follow the procedures outlined in this section.

Setup Information

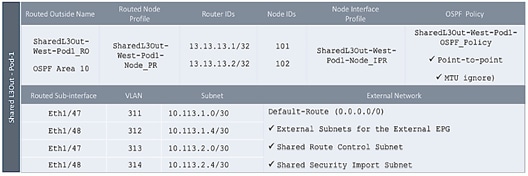

Table 15 Routed Outside – Pod-1

Deployment Steps

To configure the external routed networks under Tenant common, follow these steps:

1. Use a browser to navigate to the APIC GUI. Log in using the admin account.

2. From the top navigation menu, select Tenants > common.

3. In the left navigation pane, select and expand Tenant common > Networking > External Routed Networks.

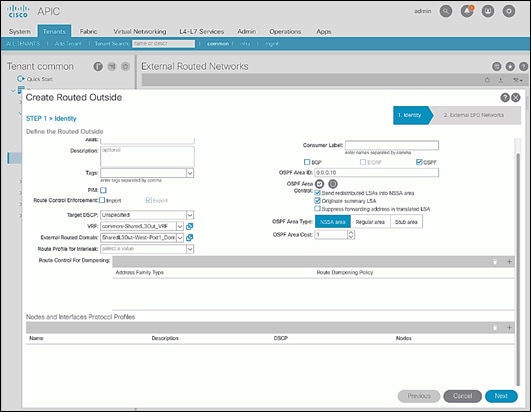

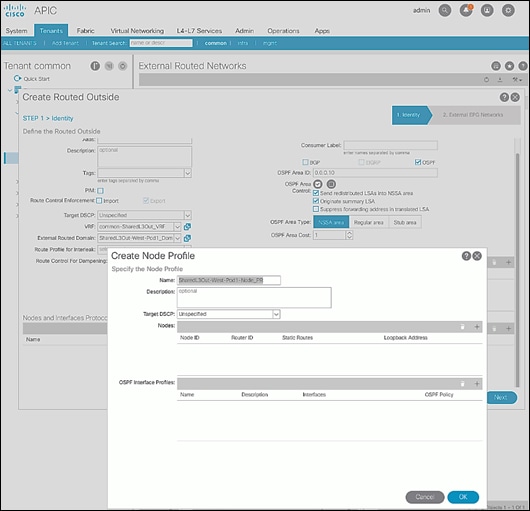

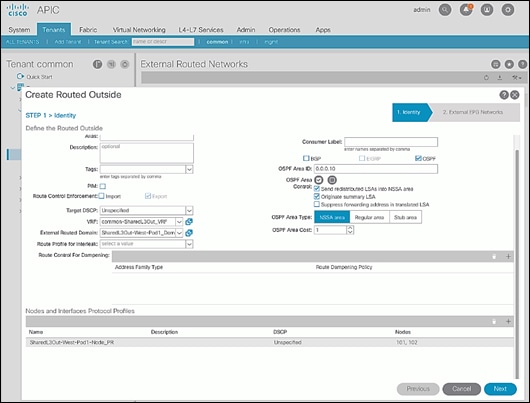

4. Right-click and select Create Routed Outside.

5. In the Create Routed Outside pop-up window, specify a Name.

6. Select the check box next to OSPF.

7. For the OSPF Area ID, enter 0.0.0.10 (should match the external gateway configuration).

8. For the VRF, select the previously created VRF from the drop-down list.

9. For the External Routed Domain, select the previously created domain from the drop-down list.

10. For Nodes and Interfaces Protocol Profiles, click [+] to add a Node Profile.

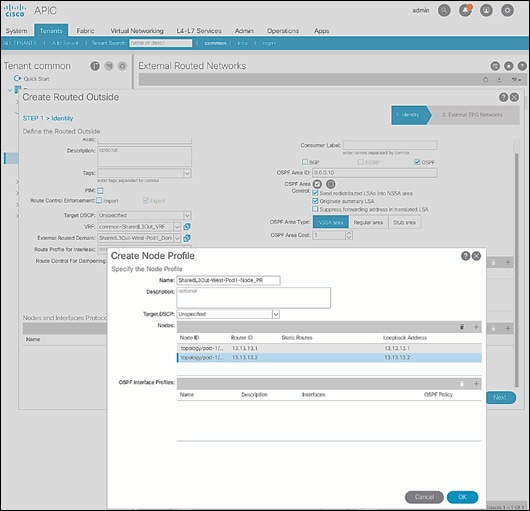

11. In the Create Node Profile pop-up window, specify a profile Name.

12. For Nodes, click [+] to add a Node.

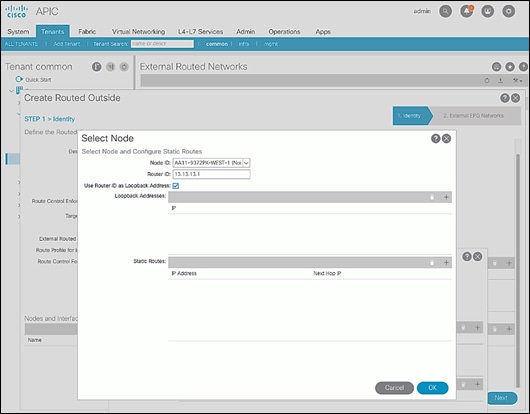

13. In the Select Node pop-up window, for the Node ID, select the first Border Leaf switch from the drop-down list. For the Router ID, specify the router ID for the first Border Leaf switch (for example, 13.13.13.1).

14. Click OK to complete selecting the Node.

15. Repeat steps 12-14 to add the second Border Leaf to the list of Nodes.

16. For OSPF Interface Profiles, click [+] to add a profile.

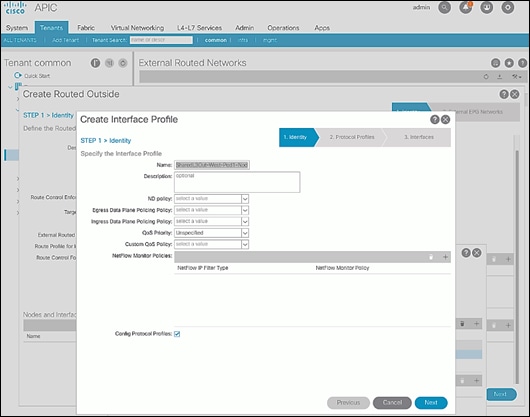

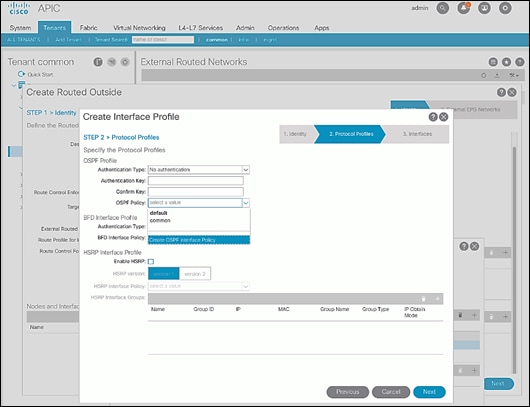

17. In the Create Interface Profile pop-up window, for Step 1 > Identity, specify a Name.

18. Click Next.

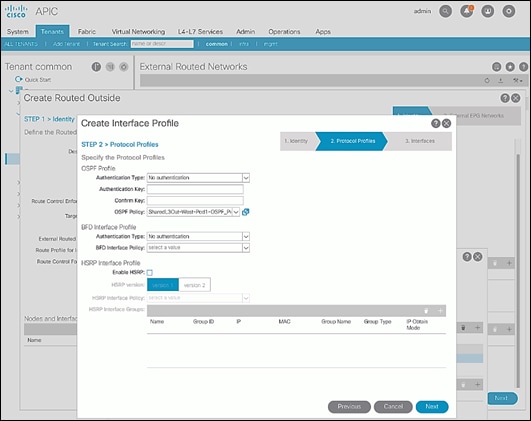

19. In Step 2 > Protocol Profiles, for the OSPF Policy, use the drop-down list to select Create OSPF Interface Policy.

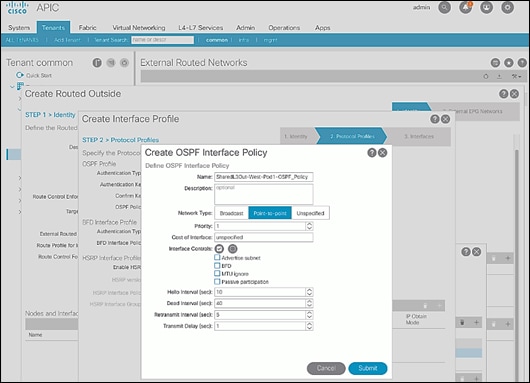

20. In the Create OSPF Interface Policy pop-up window, specify a Name. For Network Type, select Point-to-Point. For Interface Controls, select the checkbox for MTU ignore.

21. Click Submit to complete creating the OSPF policy.

22. In the Create Interface Profile pop-up window, click Next.

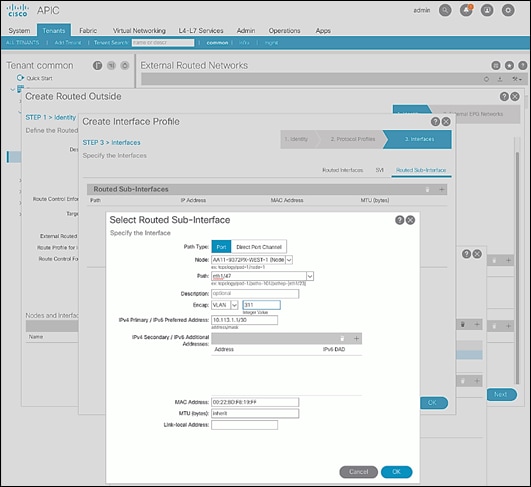

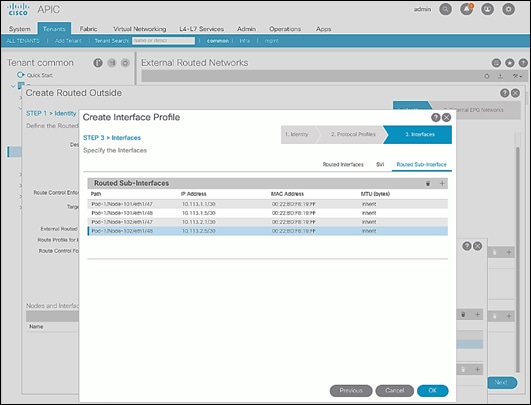

23. For STEP 3 > Interfaces, select the tab for Routed Sub-Interface. Click [+] on the right side of the window to add a routed sub-interface.

24. In the Select Routed Sub-Interface pop-up window, for Node, select the first Border Leaf. For Path, select the interface (for example, 1/47) on the first Border Leaf that connects to the first external gateway. For Encap, specify the VLAN (for example, 311). For IPv4 Primary / IPv6 Preferred Address, specify the address (for example, 10.113.1.1/30).

25. Click OK to complete configuring the first routed sub-interface.

26. Repeat steps 23-25 to create the next sub-interface that connects the first Border Leaf to the second gateway.

27. Repeat steps 23-25 to create the sub-interfaces on the second Border Leaf that connect to the two gateways.

28. Click OK to complete creating the Interface Profile.

29. In the Create Routed Outside pop-up window, click Next.

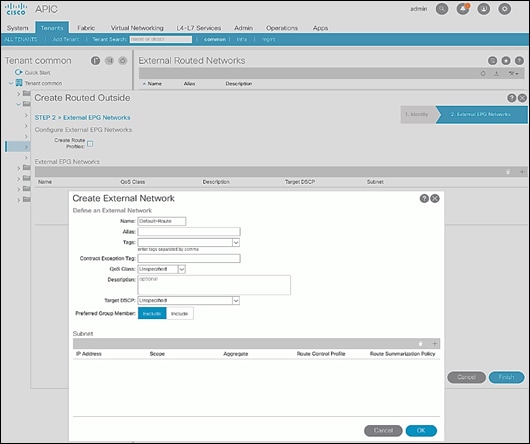

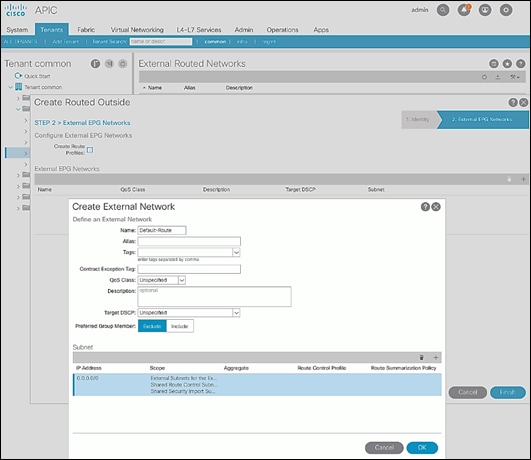

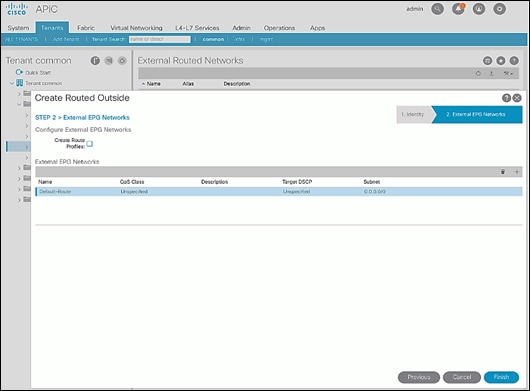

30. In STEP 2 > External EPG Networks, for External EPG Networks, click [+] to add an external network.

31. In the Create External Network pop-up window, specify a Name (for example, Default-Route).

32. For Subnet, click [+] to add a Subnet.

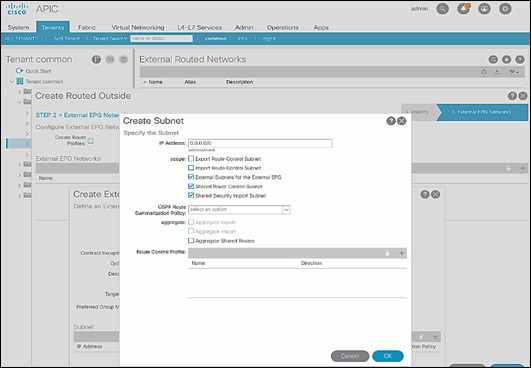

33. In the Create Subnet pop-up window, for the IP Address, enter a route (for example, 0.0.0.0/0). Select the checkboxes for External Subnets for the External EPG, Shared Route Control Subnet, and Shared Security Import Subnet.

34. Click OK to complete creating the subnet.

35. Click OK again to complete creating the external network.

36. Click Finish to complete creating the Routed Outside.
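Each routed sub-interface in step 24 sits on a /30 point-to-point subnet, so the gateway end of the link has to use the other usable address in that subnet. The sketch below derives that peer address with Python's standard `ipaddress` module; the function name `p2p_peer` is hypothetical, and the only input taken from this guide is the example address 10.113.1.1/30.

```python
import ipaddress

def p2p_peer(local_cidr):
    """Return the other usable host address on a /30 point-to-point link."""
    iface = ipaddress.ip_interface(local_cidr)
    if iface.network.prefixlen != 30:
        raise ValueError("expected a /30 point-to-point subnet")
    hosts = list(iface.network.hosts())  # a /30 has exactly two usable hosts
    if iface.ip not in hosts:
        raise ValueError("address is the network or broadcast address")
    hosts.remove(iface.ip)
    return hosts[0]

leaf_addr = "10.113.1.1/30"  # example from step 24
peer = p2p_peer(leaf_addr)
print(f"border leaf {leaf_addr} -> external gateway {peer}/30")
```

Running the same check for every sub-interface created in steps 24 through 27 is a quick way to catch an address that was typed into the wrong subnet before OSPF adjacencies fail to form.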

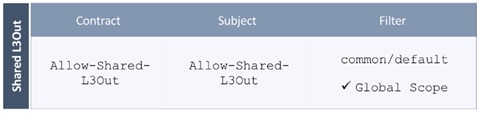

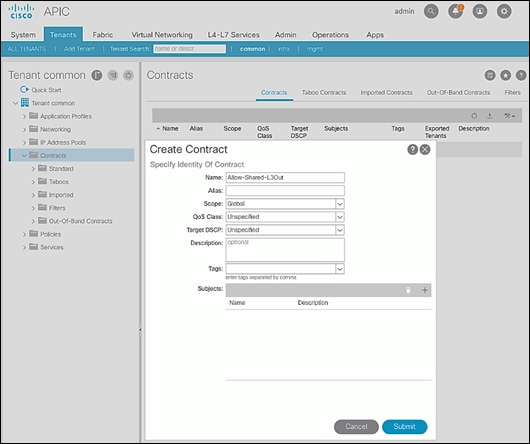

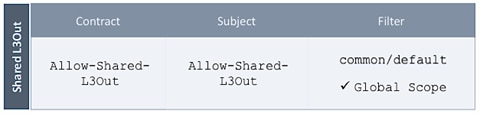

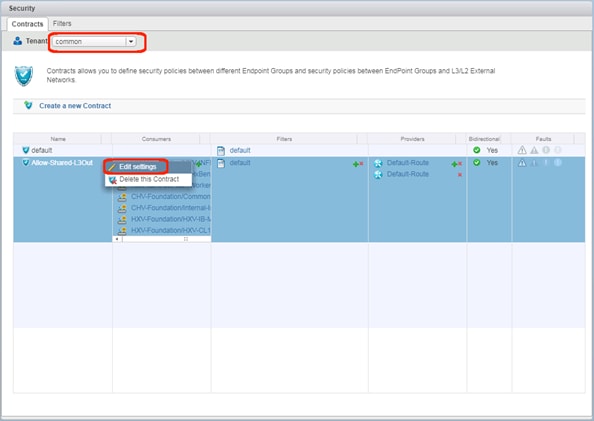

Create Contracts for External Routed Networks from Tenant (common)

To create contracts to access external routed networks, follow the procedures outlined in this section.

Setup Information

Table 16 Contract Created

Deployment Steps

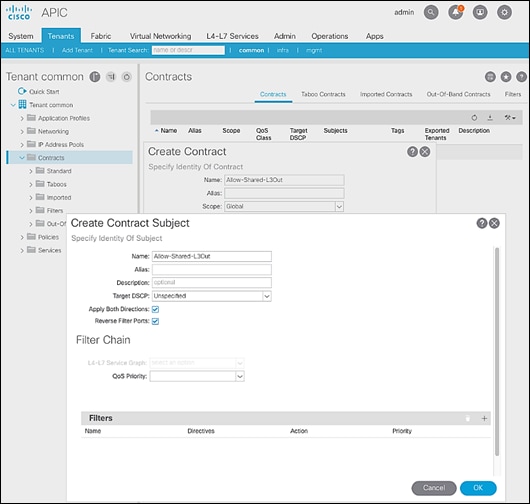

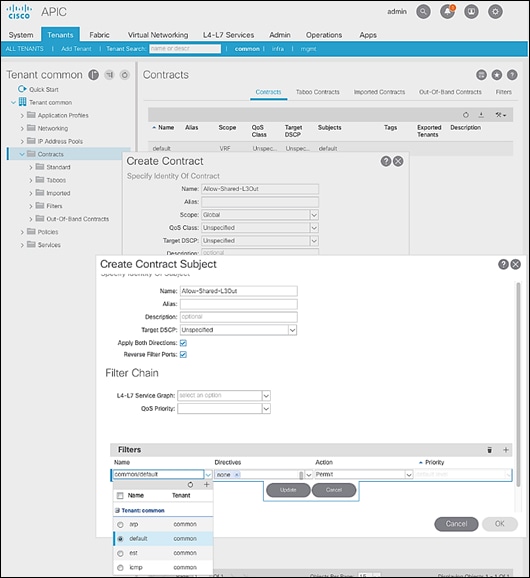

To create contracts for external routed networks from Tenant common, follow these steps:

1. Use a browser to navigate to the APIC GUI. Log in using the admin account.

2. From the top navigation menu, select Tenants > common.

3. In the left navigation pane, select and expand Tenant common > Contracts.

4. Right-click Contracts and select Create Contract.

5. In the Create Contract pop-up window, specify a Name.

6. For Scope, select Global from the drop-down list to allow the contract to be consumed by all tenants.

7. For Subjects, click [+] on the right side to add a contract subject.

8. In the Create Contract Subject pop-up window, specify a Name.

9. For Filters, click [+] on the right side to add a filter.

10. In the Filters section of the window, for Name, select default (common) from the drop-down list to apply the default filter from Tenant common.

11. Click Update.

12. Click OK to complete creating the contract subject.

13. Click Submit to complete creating the contract.
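The contract built in the steps above could also be described as an APIC REST payload. The following is a sketch under stated assumptions: the class names (`vzBrCP`, `vzSubj`, `vzRsSubjFiltAtt`) and the `scope` value `global` reflect the APIC object model as commonly documented and should be verified against your release. The contract name comes from the example used later in this guide; the subject name `Allow-All` is hypothetical.

```python
import json

def mo(cls, attrs=None, children=None):
    """Build one managed-object dict in APIC JSON shape (hypothetical helper)."""
    body = {"attributes": dict(attrs or {})}
    if children:
        body["children"] = list(children)
    return {cls: body}

contract = mo("vzBrCP", {"name": "Allow-Shared-L3Out", "scope": "global"}, [
    # Subject name is a placeholder; the guide does not specify one.
    mo("vzSubj", {"name": "Allow-All"}, [
        # Reference to the pre-existing "default" filter in tenant common.
        mo("vzRsSubjFiltAtt", {"tnVzFilterName": "default"}),
    ]),
])

contract_payload = mo("fvTenant", {"name": "common"}, [contract])
print(json.dumps(contract_payload, indent=2))
```

The `global` scope is what allows EPGs in other tenants to consume the contract, matching step 6.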

Provide Contracts for External Routed Networks from Tenant (common)

To provide contracts to access external routed networks, follow the procedures outlined in this section.

Setup Information

Table 17 External Routed Network Contracts

Deployment Steps

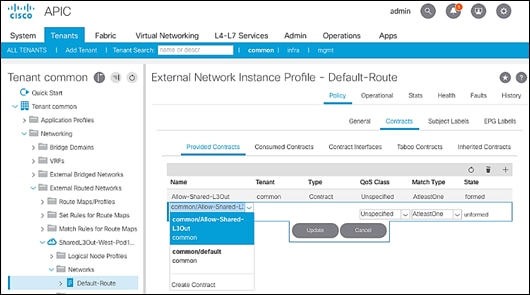

To provide contracts for external routed networks from Tenant common, follow these steps:

1. Use a browser to navigate to the APIC GUI. Log in using the admin account.

2. From the top navigation menu, select Tenants > common.

3. In the left navigation pane, select and expand Tenant common > Networking > External Routed Networks.

4. Select and expand the recently created External Routed Network for SharedL3out or Routed Outside network (for example, SharedL3Out-West-Pod1_RO).

5. Select and expand Networks.

6. Select the recently created route (for example, Default-Route).

7. In the right window pane, select the tab for Policy and then Contracts.

8. Under the Provided Contracts tab, click [+] on the right to add a Provided Contract.

9. For Name, select the previously created contract (for example, common/Allow-Shared-L3Out) from the drop-down list.

10. Click Update.

Other tenants can now consume the Allow-Shared-L3Out contract to route traffic outside the ACI fabric. This deployment example uses the default filter, which allows all traffic. More restrictive contracts can be created to limit access to destinations outside the fabric.
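As an illustration of what the provider relationship looks like outside the GUI, the sketch below attaches the contract to the external EPG by distinguished name. The relative-name prefixes (`tn-`, `out-`, `instP-`) and the class names `l3extInstP` and `fvRsProv` are assumptions about the APIC object model that should be checked with the API Inspector; the object names are the examples used in the steps above.

```python
import json

TENANT = "common"
L3OUT = "SharedL3Out-West-Pod1_RO"   # example name from step 4
EXT_EPG = "Default-Route"            # example name from step 6
CONTRACT = "Allow-Shared-L3Out"      # example name from step 9

def ext_epg_dn(tenant, l3out, ext_epg):
    """Distinguished name of an external EPG (assumed RN prefixes)."""
    return f"uni/tn-{tenant}/out-{l3out}/instP-{ext_epg}"

provide_payload = {
    "l3extInstP": {
        "attributes": {"dn": ext_epg_dn(TENANT, L3OUT, EXT_EPG)},
        "children": [
            # Provider side of the contract; consumers live in other tenants.
            {"fvRsProv": {"attributes": {"tnVzBrCPName": CONTRACT}}},
        ],
    }
}
print(json.dumps(provide_payload, indent=2))
```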

Configure External Gateways in the Outside Network

This section provides a sample configuration from the external Layer 3 gateway routers that connect to the fabric. The gateways are in the external network and peer using OSPF with the two ACI border leaf switches in the fabric. Nexus 7000 routers are used as the external gateway routers in this design, but other Cisco models can also be used.

The gateway configuration shown below shows only the relevant portion of the configuration; it is not the complete configuration.

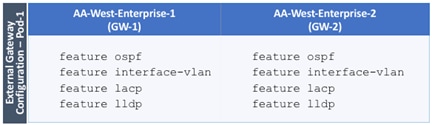

Enable Protocols

The protocols used between the ACI border leaf switches and external gateways have to be explicitly enabled on the Nexus platforms used as external gateways in this design. The configuration to enable these protocols is provided below.

Table 18 Protocols Enabled

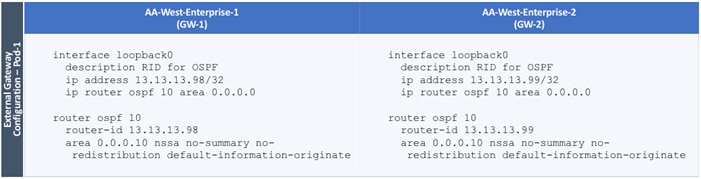

Configure OSPF

OSPF is used between the external gateways and ACI border leaf switches to exchange routes between the two domains. The global configuration for OSPF is provided below. Loopback interfaces are used as the router IDs for OSPF. Note that the interfaces to the ACI border leaf switches will be in OSPF Area 10.

Table 19 External Gateways for Pod-2 – Protocols

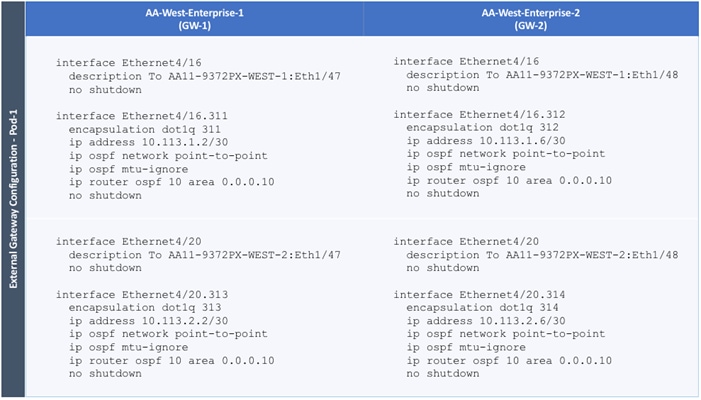
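The APIC step for the Routed Outside enters the OSPF Area ID as 0.0.0.10, while this section refers to Area 10. These are the same 32-bit area number written in dotted-decimal and integer form. The short sketch below, using only the standard `ipaddress` module, converts between the two so that both sides of the adjacency can be compared; the function names are hypothetical.

```python
import ipaddress

def area_to_int(area):
    """Accept '10' or '0.0.0.10' and return the 32-bit OSPF area number."""
    if "." in area:
        return int(ipaddress.IPv4Address(area))
    return int(area)

def area_to_dotted(area):
    """Return the dotted-decimal form of an OSPF area given in either notation."""
    return str(ipaddress.IPv4Address(area_to_int(area)))

apic_area = "0.0.0.10"   # value entered in the APIC GUI
gateway_area = "10"      # integer notation used in the text

# Both notations must resolve to the same number for OSPF neighbors to form.
print(area_to_int(apic_area) == area_to_int(gateway_area))
print(area_to_dotted(gateway_area))
```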

Configure Interfaces

The interface level configuration for connectivity between external gateways and ACI border leaf switches in Pod-1 is provided below. Note that interfaces to ACI are in OSPF Area 10 while the loopbacks and port-channels between the gateways are in OSPF Area 0.

Table 20 Interface Configuration – To ACI Border Leaf Switches

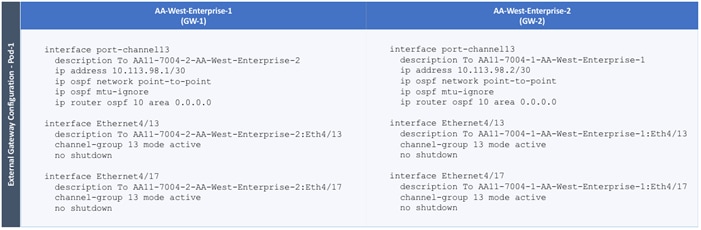
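To show how the gateway side has to mirror the values entered on the APIC (VLAN encapsulation, /30 addressing, point-to-point network type, MTU ignore, and Area 10), the sketch below renders an NX-OS style sub-interface stanza from those inputs. It is an illustration only and is not the validated configuration from Table 20: the parent interface `Ethernet4/4` and the OSPF process tag `10` are hypothetical placeholders, and the function name is invented for this example.

```python
import ipaddress

def gateway_subif(parent, vlan, leaf_cidr, ospf_tag, area):
    """Render a gateway sub-interface stanza facing one border leaf sub-interface."""
    leaf = ipaddress.ip_interface(leaf_cidr)
    peers = [h for h in leaf.network.hosts() if h != leaf.ip]
    if leaf.network.prefixlen != 30 or len(peers) != 1:
        raise ValueError("expected a usable host address on a /30 link")
    gw_addr = f"{peers[0]}/{leaf.network.prefixlen}"
    return "\n".join([
        f"interface {parent}.{vlan}",
        f"  encapsulation dot1q {vlan}",        # must match the Encap VLAN on the leaf
        f"  ip address {gw_addr}",              # other host address in the /30
        "  ip ospf network point-to-point",     # matches the OSPF interface policy
        "  ip ospf mtu-ignore",                 # matches the MTU ignore control
        f"  ip router ospf {ospf_tag} area {area}",
        "  no shutdown",
    ])

# VLAN and leaf address are the examples from the Routed Outside steps;
# the interface name and process tag are placeholders.
config_text = gateway_subif("Ethernet4/4", 311, "10.113.1.1/30", "10", "0.0.0.10")
print(config_text)
```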

The configuration on the port-channel with 2x10GbE links that provide direct connectivity between the external gateways is provided below.

Table 21 Interface Configuration – Between External Gateways

Deploy CHV-Foundation Tenant

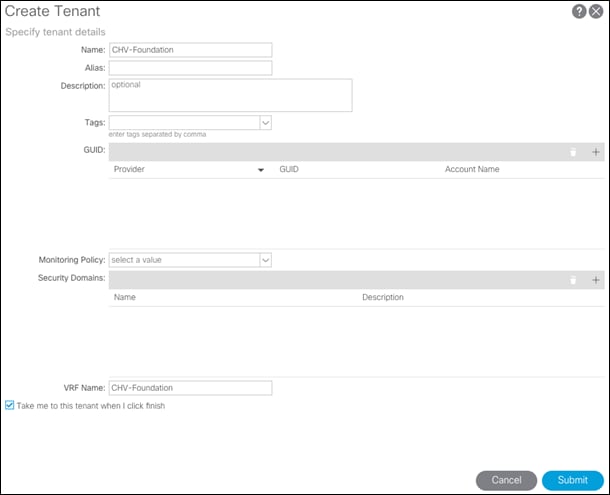

This section details the steps for creating the CHV-Foundation Tenant in the ACI Fabric. This tenant will host infrastructure connectivity for Internal Infrastructure (VMware ESXi on UCS nodes, Hitachi VSP) as well as Shared Infrastructure (AD/DNS). To deploy the CHV-Foundation Tenant, follow these steps:

1. In the APIC GUI, select Tenants -> Add Tenant.

2. Name the Tenant CHV-Foundation.

3. For the VRF Name, enter CHV-Foundation. Keep the check box “Take me to this tenant when I click finish” checked.

4. Click Submit to finish creating the Tenant.

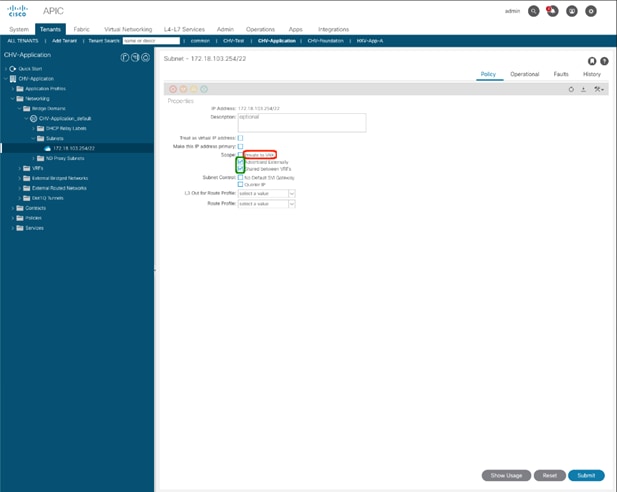

Create Bridge Domains

The following Bridge Domains will be created and associated with the listed EPGs:

| Bridge Domain | EPG | VLAN | Subnet (gw/mask) |
| --- | --- | --- | --- |
| BD-CHV-Foundation-Internal | Site-Infra | 119 | 10.1.168.254/24 |
| BD-CHV-Common | Common | 319 | 10.168.168.254/24 |
| BD-ESXi | Host-Mgmt | 419 | 10.4.168.254/24 |
| BD-vMotion | vMotion | 519 | |

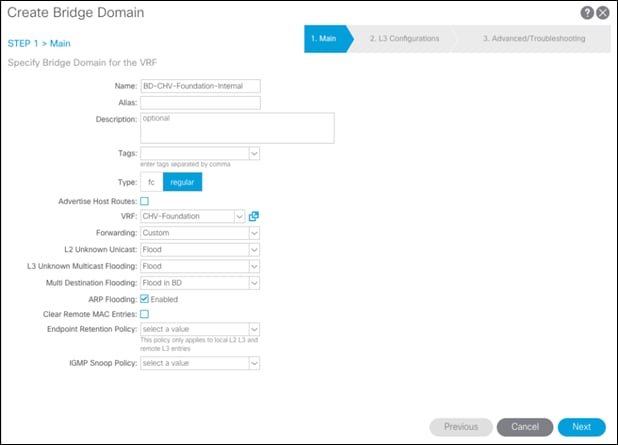

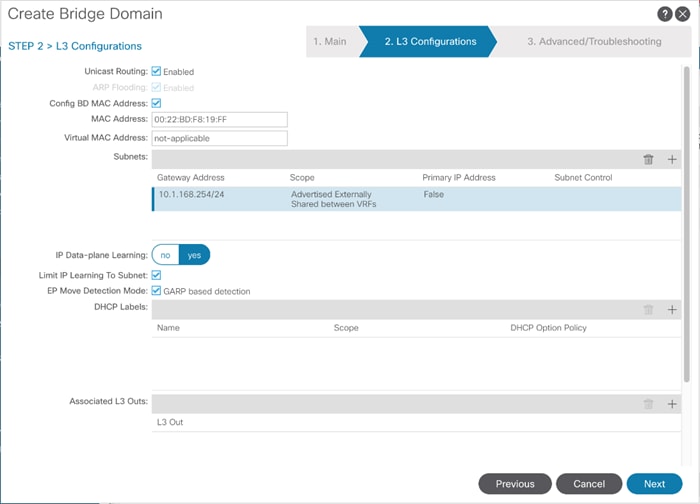

To create a Bridge Domain, follow these steps:

1. In the left pane, expand Tenant CHV-Foundation and Networking.

2. Right-click Bridge Domains and select Create Bridge Domain.

3. Name the Bridge Domain BD-CHV-Foundation-Internal.

4. Select CHV-Foundation from the VRF drop-down list.

5. Select Custom under Forwarding and enable Flood for L2 Unknown Unicast.

6. Click Next.

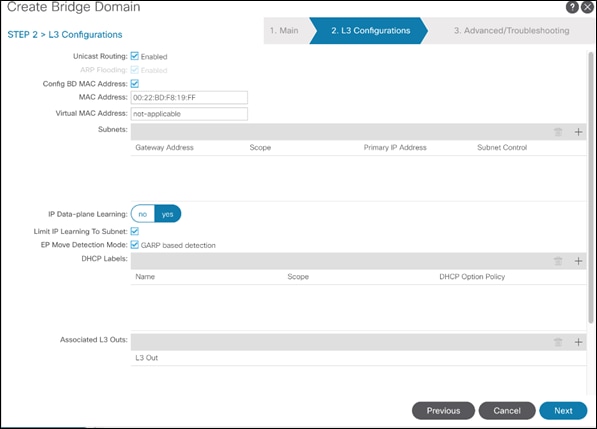

7. Under L3 Configurations, make sure Limit IP Learning to Subnet is selected and select EP Move Detection Mode – GARP based detection.

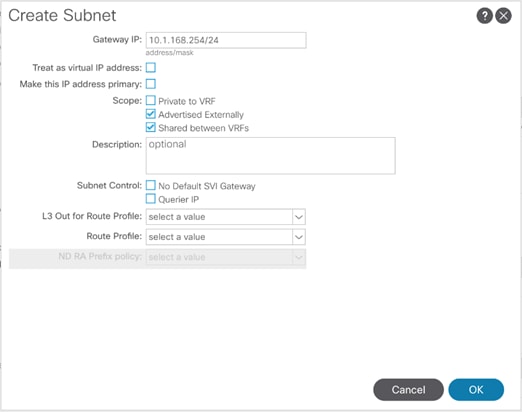

8. Select the + option to the far right of Subnets.

9. Provide the appropriate Gateway IP and mask for the subnet.

10. Select the Scope options for Advertised Externally and Shared between VRFs.

11. Click OK.

12. Select Next.

13. No changes are needed for Advanced/Troubleshooting. Click Finish to finish creating the Bridge Domain.

14. Repeat these steps to create the BD-CHV-Common, BD-ESXi, and BD-vMotion bridge domains, omitting the Subnet creation for the BD-vMotion bridge domain.
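Because the same steps are repeated for four bridge domains, the settings lend themselves to a small data-driven sketch. The code below builds one payload per bridge domain from the table in this section and checks each gateway/mask with the standard `ipaddress` module. The class and attribute names (`fvBD`, `fvRsCtx`, `fvSubnet`, `unkMacUcastAct`, `limitIpLearnToSubnets`, `epMoveDetectMode`, and the `public,shared` scope) are assumptions about the APIC object model and should be verified before use.

```python
import ipaddress
import json

# (bridge domain, gateway/mask or None) from the table in this section
BRIDGE_DOMAINS = [
    ("BD-CHV-Foundation-Internal", "10.1.168.254/24"),
    ("BD-CHV-Common", "10.168.168.254/24"),
    ("BD-ESXi", "10.4.168.254/24"),
    ("BD-vMotion", None),  # non-routed vMotion traffic, so no subnet
]

def bridge_domain(name, gateway, vrf="CHV-Foundation"):
    """Build an fvBD payload mirroring the GUI choices in steps 3 through 10."""
    children = [{"fvRsCtx": {"attributes": {"tnFvCtxName": vrf}}}]
    if gateway is not None:
        ipaddress.ip_interface(gateway)  # raises ValueError if malformed
        children.append({"fvSubnet": {"attributes": {
            "ip": gateway,
            "scope": "public,shared",  # Advertised Externally + Shared between VRFs
        }}})
    return {"fvBD": {
        "attributes": {
            "name": name,
            "unkMacUcastAct": "flood",        # Flood for L2 Unknown Unicast
            "limitIpLearnToSubnets": "yes",   # Limit IP Learning to Subnet
            "epMoveDetectMode": "garp",       # GARP based detection
        },
        "children": children,
    }}

bd_payloads = [bridge_domain(name, gw) for name, gw in BRIDGE_DOMAINS]
print(json.dumps(bd_payloads, indent=2))
```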

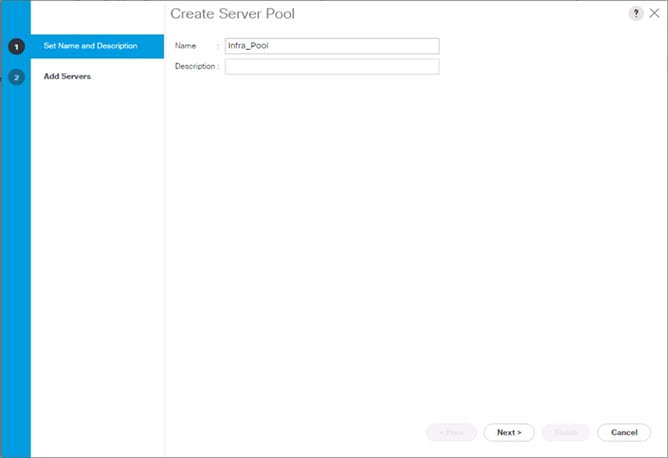

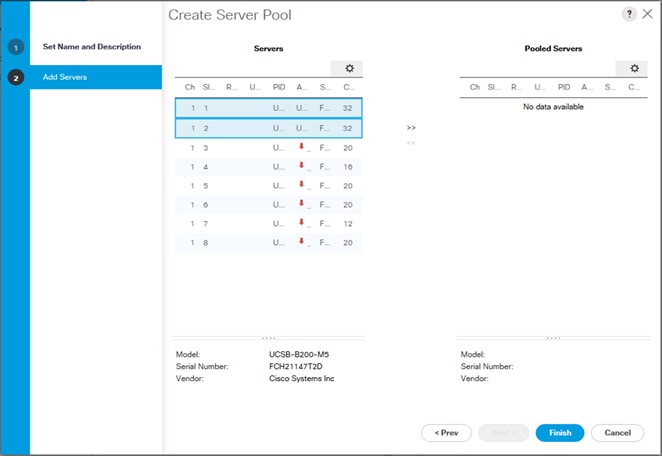

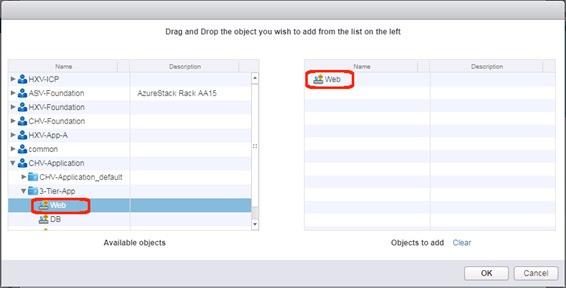

Create Application Profile for Infrastructure

Infrastructure will reside within the Foundation tenant to provide shared services like AD/DNS, but will also contain the internal infrastructure backend such as UCSM, vCenter, and Hitachi VSP. To create an application profile for Infra, follow these steps:

1. In the left pane, expand tenant CHV-Foundation, right-click Application Profiles and select Create Application Profile.

2. Name the Application Profile Infra and click Submit to complete adding the Application Profile.

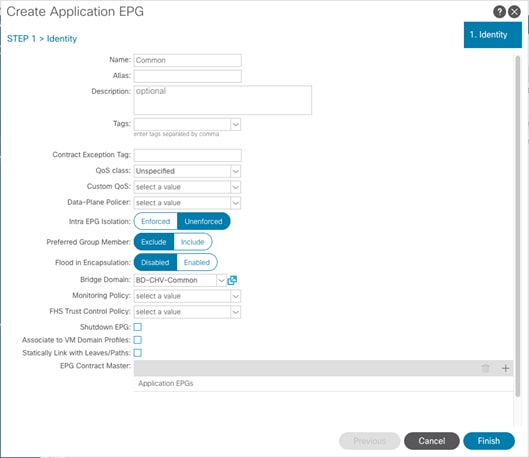

Create EPG for Shared Infra Access

This EPG will contain common resources, such as vCenter and AD/DNS, that are used by the infrastructure as well as by the applications within the tenant.

To create the EPG for Shared-Infra access, follow these steps:

1. In the left pane, expand the Application Profiles and right-click the Infra Application Profile and select Create Application EPG.

2. Name the EPG Common.

3. From the Bridge Domain drop-down list, select Bridge Domain BD-CHV-Common.

4. Click Finish to complete creating the EPG.

5. In the left menu, expand the newly created EPG, right-click Domains and select Add L2 External Domain Association.

6. Select the CHV-Site1-UCS L2 External Domain Profile.

7. Click Submit.

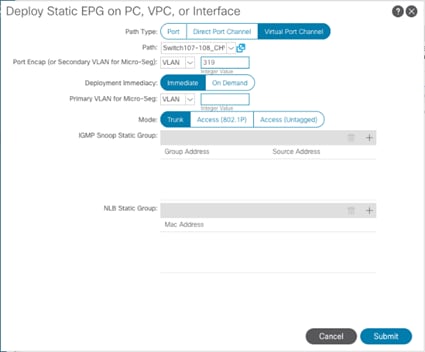

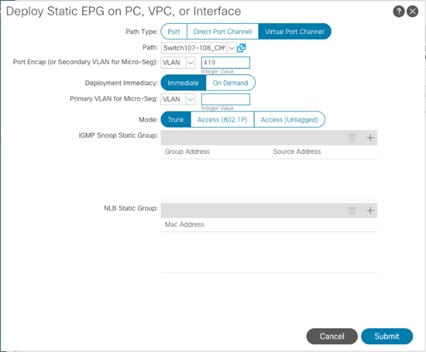

8. In the left menu, right-click Static Ports and select Deploy Static EPG on PC, VPC, or Interface.

9. Select the Virtual Port Channel Path Type, then for Path select the vPC for the first UCS Fabric Interconnect.

10. For Port Encap leave VLAN selected and fill in the UCS Common VLAN ID <319>.

11. Set the Deployment Immediacy to Immediate and click Submit.

12. Repeat steps 8-11 to add the Static Port mapping for the second UCS Fabric Interconnect vPC.

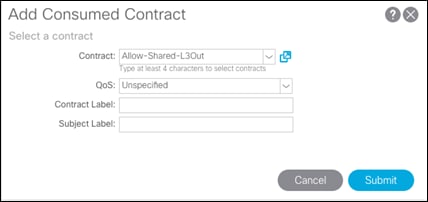

13. In the left navigation pane for the Common EPG, right-click Contracts and select Add Consumed Contract.

14. In the Add Consumed Contract pop-up window, select the Allow-Shared-L3Out contract from the drop-down list.

15. Click Submit.
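Taken together, the steps above give the Common EPG a bridge domain, an L2 external domain association, two static vPC bindings on VLAN 319, and one consumed contract. The sketch below assembles those pieces into a single payload. The class names, the `uni/l2dom-` prefix, and the `protpaths` path format are assumptions about the APIC object model; the leaf node IDs (101, 102) and the vPC policy group names are hypothetical placeholders that must be replaced with the values from your fabric.

```python
import json

def mo(cls, attrs=None, children=None):
    """Build one managed-object dict in APIC JSON shape (hypothetical helper)."""
    body = {"attributes": dict(attrs or {})}
    if children:
        body["children"] = list(children)
    return {cls: body}

def vpc_path(pod, leaf_a, leaf_b, policy_group):
    """Assumed DN format for a vPC spanning a pair of leaf switches."""
    return f"topology/pod-{pod}/protpaths-{leaf_a}-{leaf_b}/pathep-[{policy_group}]"

# Placeholder vPC policy group names for the two UCS Fabric Interconnects.
UCS_VPCS = ["UCS-FI-A_vPC", "UCS-FI-B_vPC"]

static_ports = [
    mo("fvRsPathAtt", {
        "tDn": vpc_path(1, 101, 102, name),
        "encap": "vlan-319",           # UCS Common VLAN from step 10
        "instrImedcy": "immediate",    # Deployment Immediacy from step 11
    })
    for name in UCS_VPCS
]

common_epg = mo("fvAEPg", {"name": "Common"}, [
    mo("fvRsBd", {"tnFvBDName": "BD-CHV-Common"}),
    mo("fvRsDomAtt", {"tDn": "uni/l2dom-CHV-Site1-UCS"}),
    *static_ports,
    mo("fvRsCons", {"tnVzBrCPName": "Allow-Shared-L3Out"}),
])
print(json.dumps(common_epg, indent=2))
```

Generating the static bindings from a list keeps the VLAN and immediacy identical across both Fabric Interconnect vPCs, which is the point of repeating the static port steps by hand.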

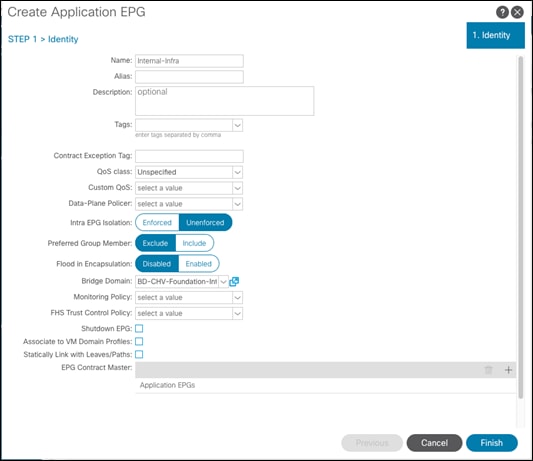

Create EPG for Internal Infra Access

This EPG is an example of backend infrastructure that doesn’t need to be exposed to the application tenant, containing components such as Cisco UCSM and the Hitachi VSP.

To create the EPG for Internal-Infra access, follow these steps:

1. In the left pane, expand the Application Profiles and right-click the Infra Application Profile and select Create Application EPG.

2. Name the EPG Internal-Infra.

3. From the Bridge Domain drop-down list, select Bridge Domain BD-CHV-Foundation-Internal.

4. Click Finish to complete creating the EPG.

5. In the left menu, expand the newly created EPG, right-click Domains and select Add L2 External Domain Association.

6. Select the CHV-Site1-UCS L2 External Domain Profile.

7. Click Submit.

8. Repeat steps 5-7 for the CHV-Site1-Mgmt L2 external domain.

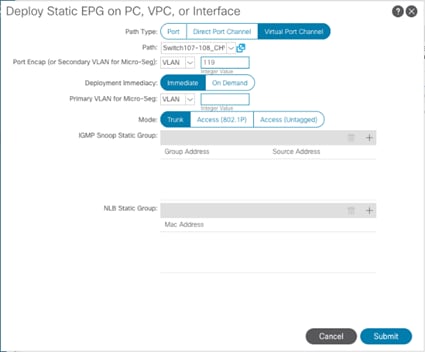

9. In the left menu, right-click Static Ports and select Deploy Static EPG on PC, VPC, or Interface.

10. Select the Virtual Port Channel Path Type, then for Path select the vPC for the first UCS Fabric Interconnect.

11. For Port Encap leave VLAN selected and fill in the UCS Site-Infra VLAN ID <119>.

12. Set the Deployment Immediacy to Immediate and click Submit.

13. Repeat steps 9-12 to add the Static Port mapping for the second UCS Fabric Interconnect, and the upstream management vPC.

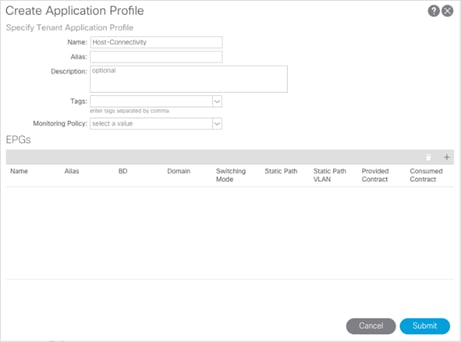

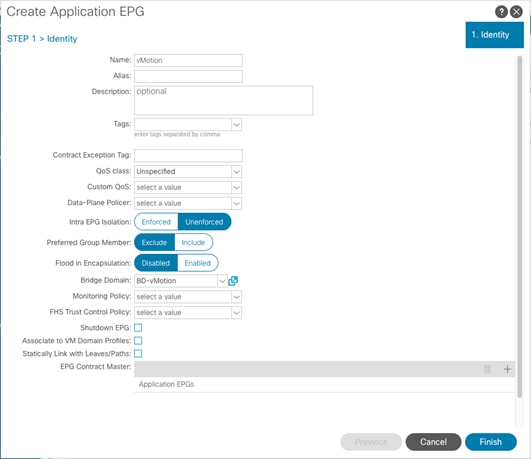

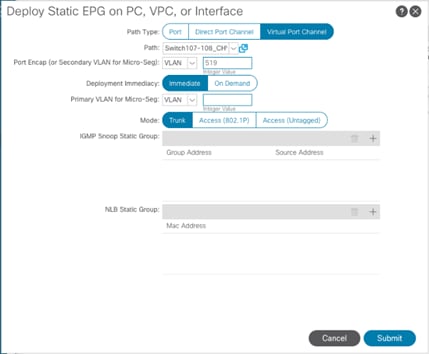

Create Application Profile for Host Connectivity

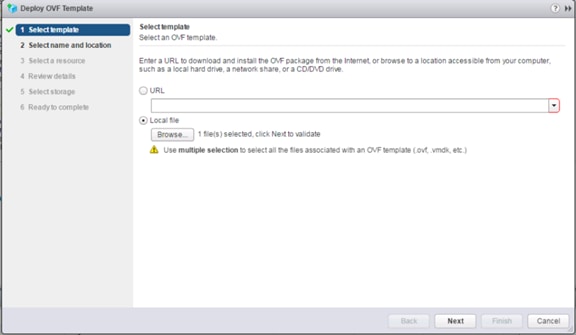

The Foundation tenant will also contain EPGs for hypervisor-specific traffic, grouped into their own Application Profile. These are a Host-Mgmt EPG for the ESXi management VMkernel, which will be connected to vCenter via a contract, and a vMotion EPG, which will hold the non-routed vMotion traffic between the ESXi hosts.

To create an application profile for Host-Connectivity, follow these steps:

1. In the left pane, expand tenant CHV-Foundation, right-click Application Profiles and select Create Application Profile.

2. Name the Application Profile Host-Connectivity and click Submit to complete adding the Application Profile.

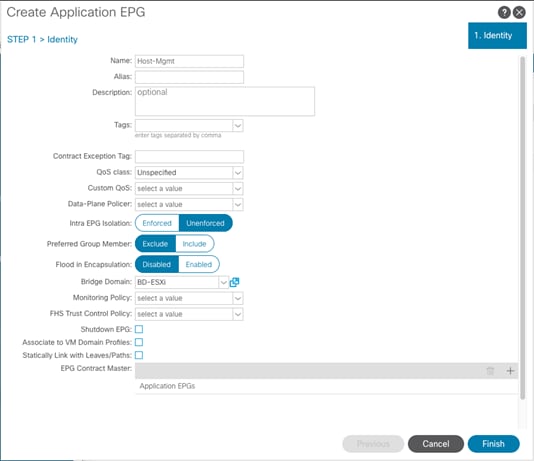

Create EPG for Host Management

This EPG will be for the management communication between ESXi hosts and vCenter.

To create the EPG for Host-Mgmt access, follow these steps:

1. In the left pane, expand the Application Profiles, right-click the Host-Connectivity Application Profile, and select Create Application EPG.

2. Name the EPG Host-Mgmt.

3. From the Bridge Domain drop-down list, select Bridge Domain BD-ESXi.

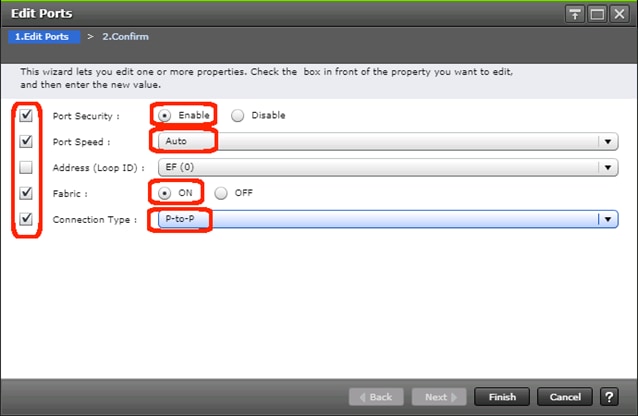

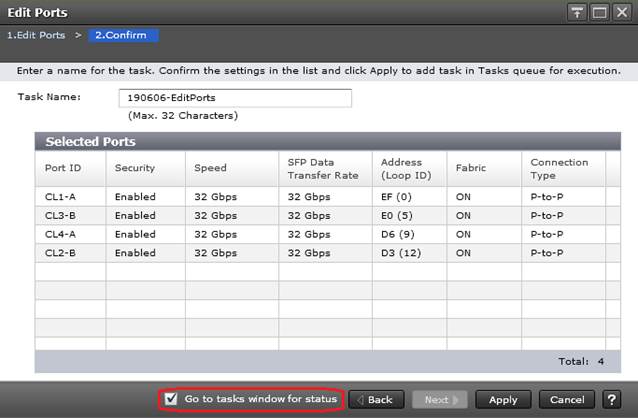

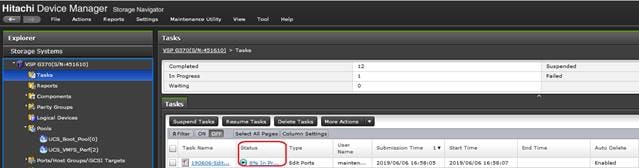

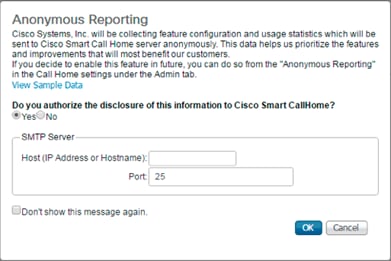

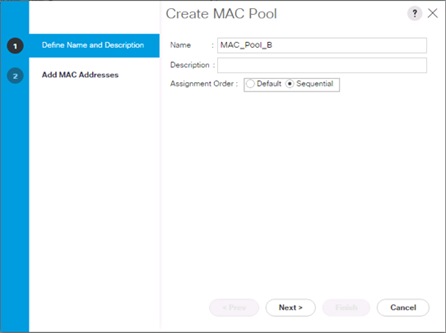

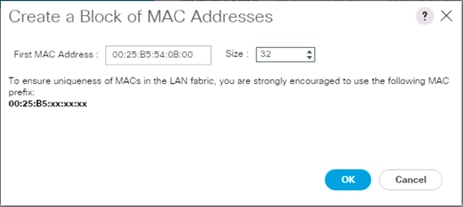

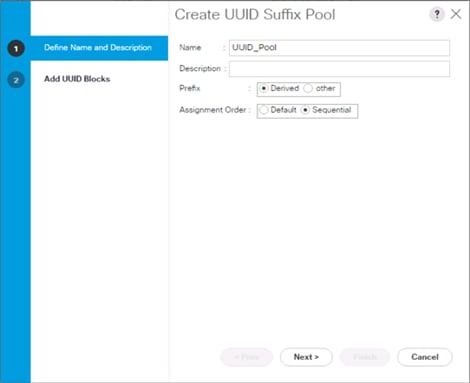

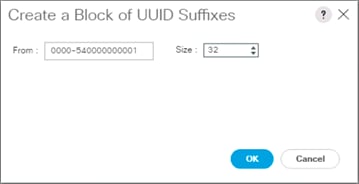

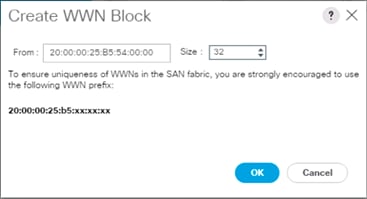

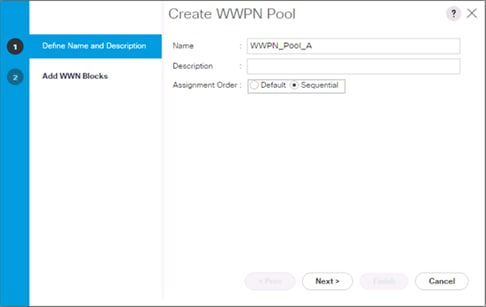

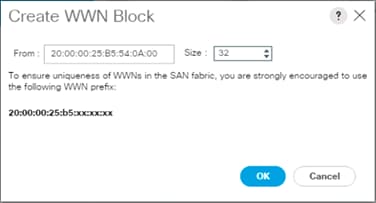

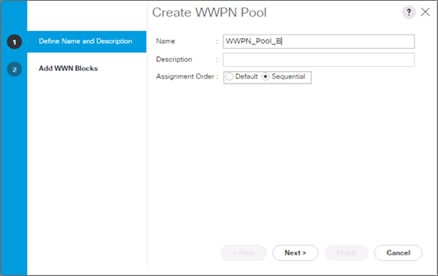

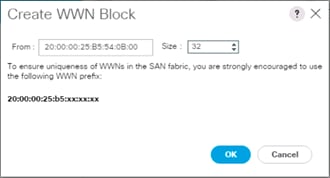

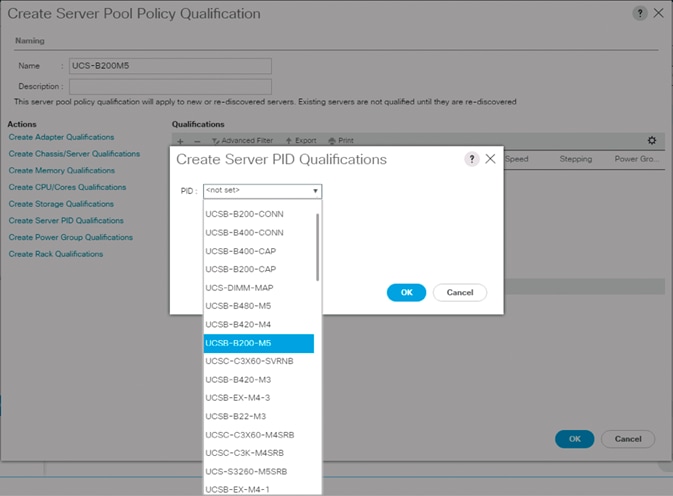

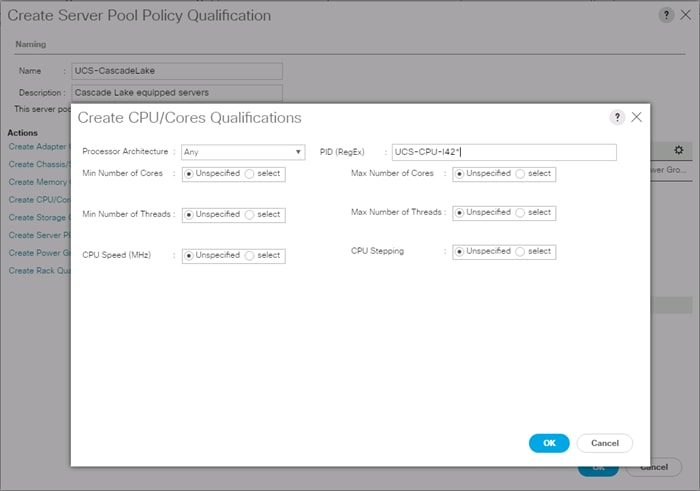

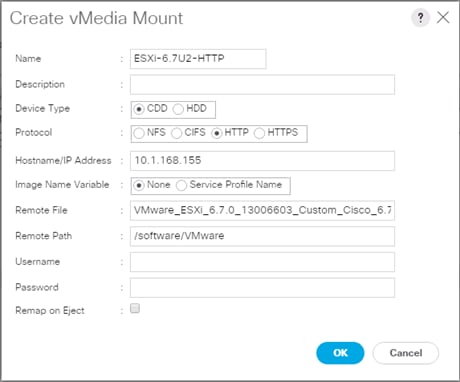

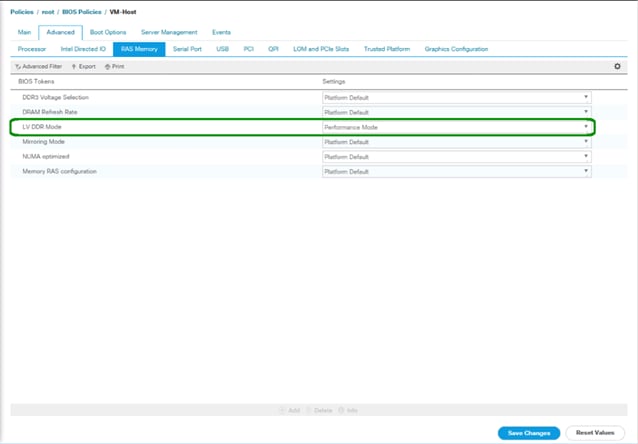

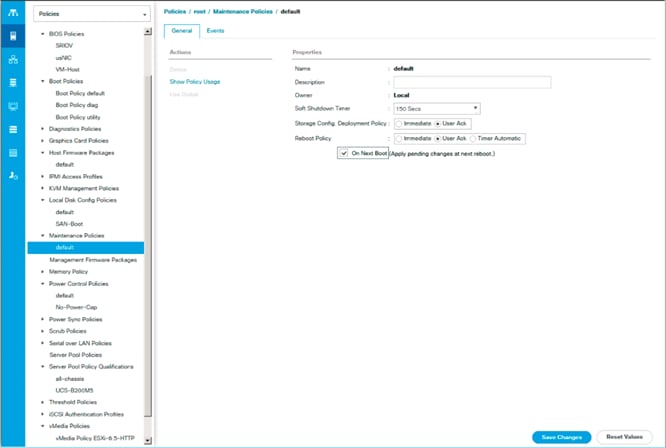

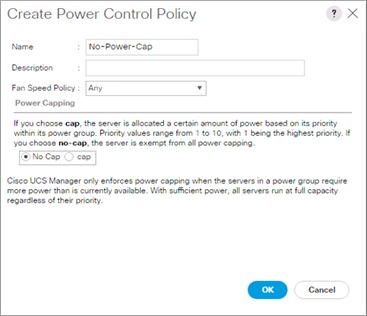

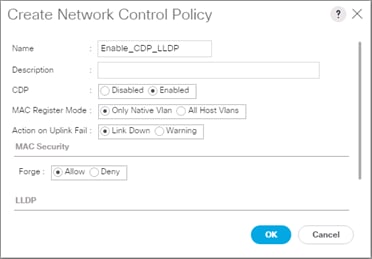

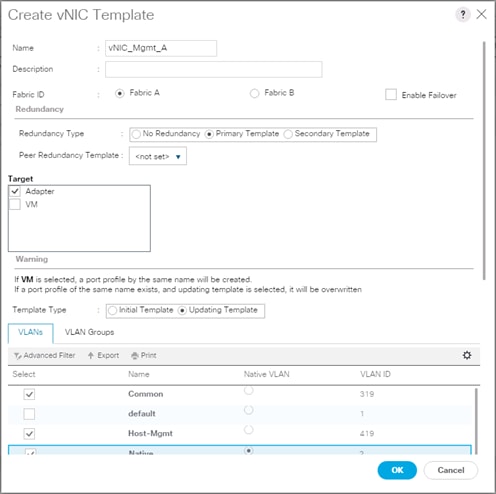

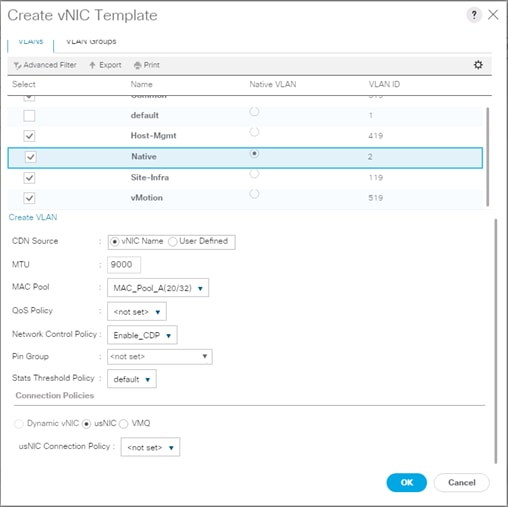

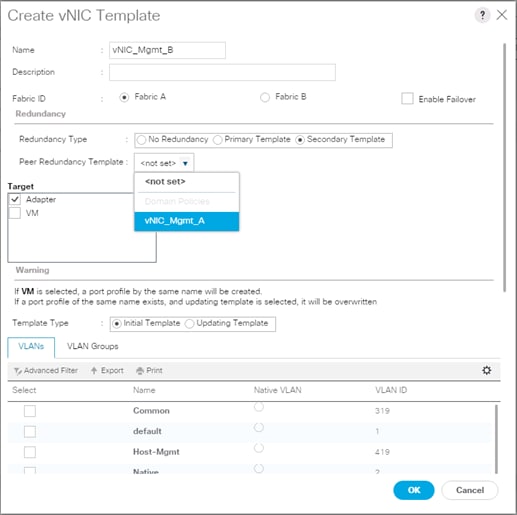

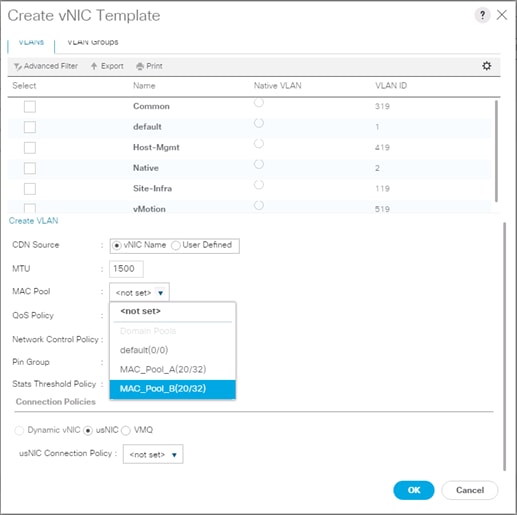

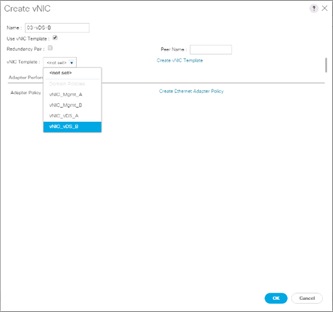

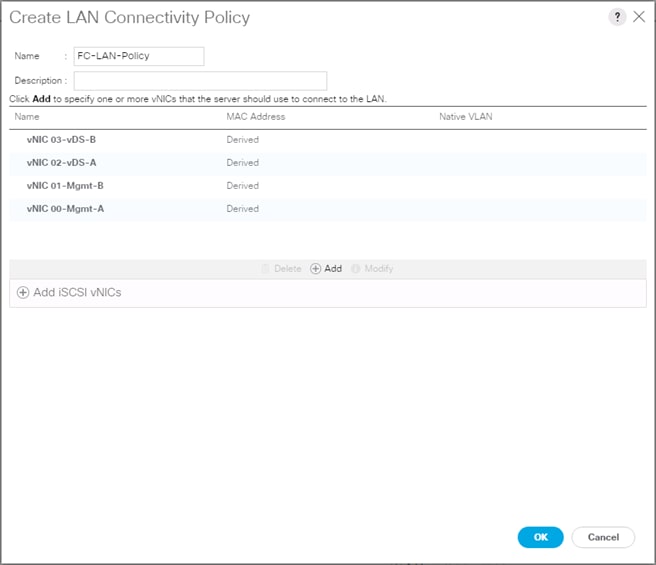

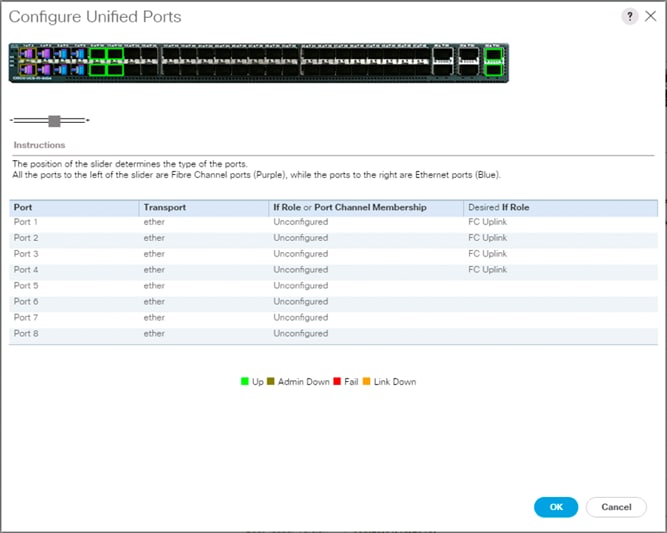

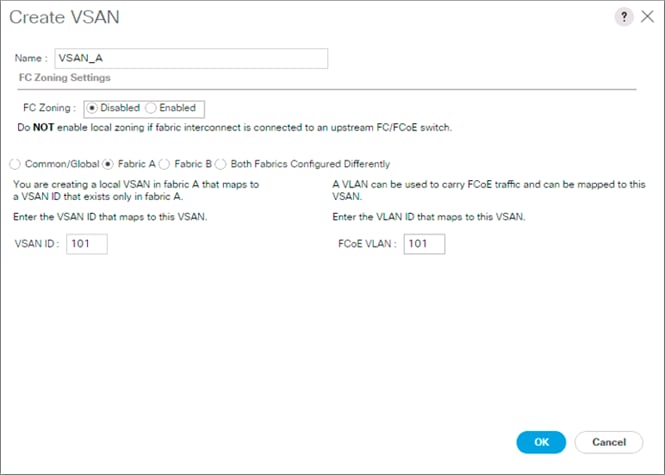

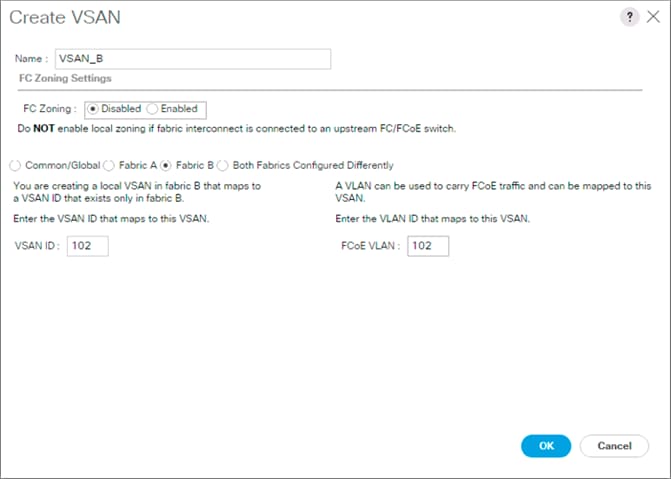

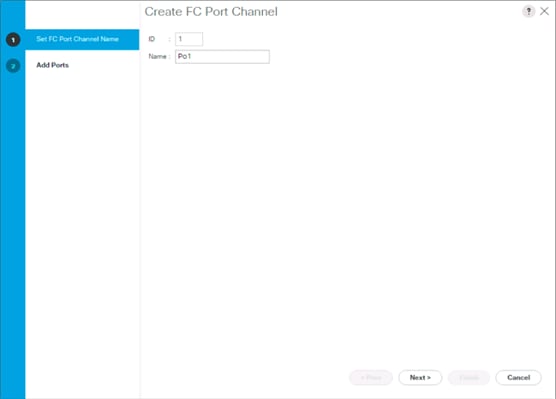

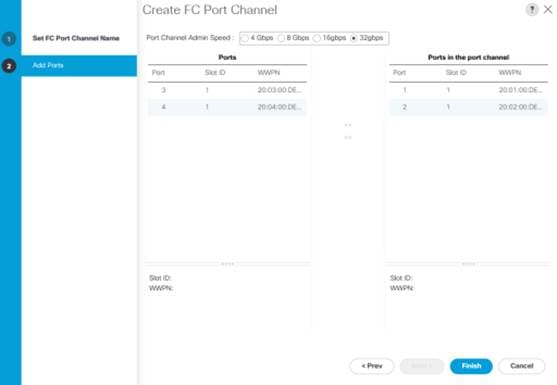

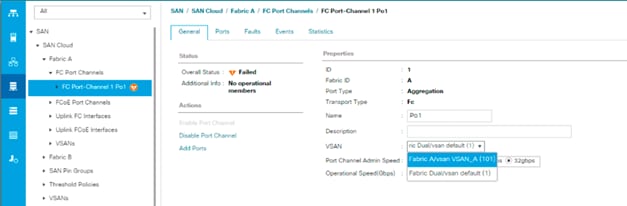

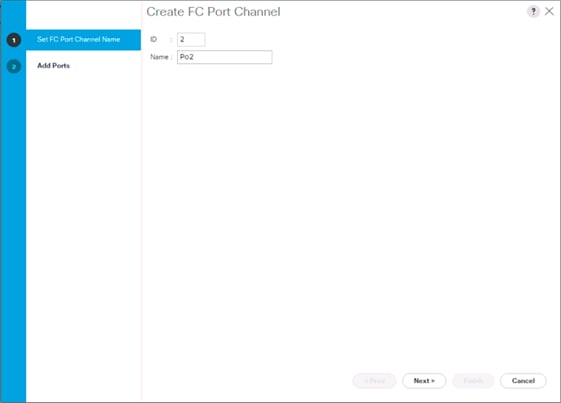

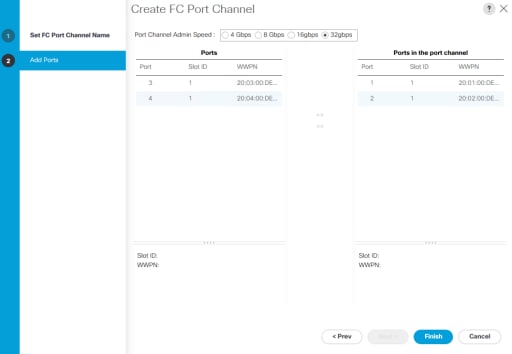

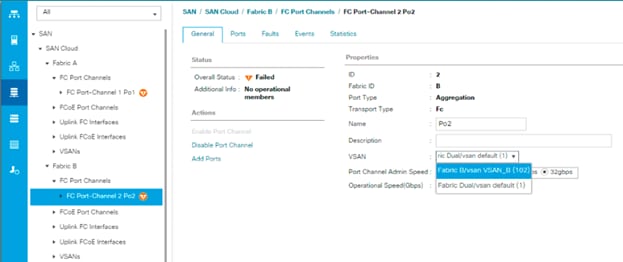

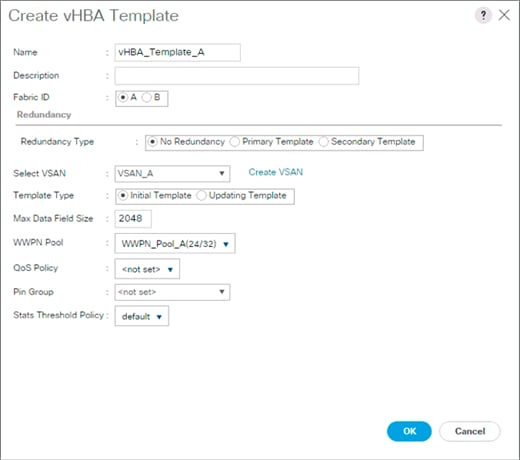

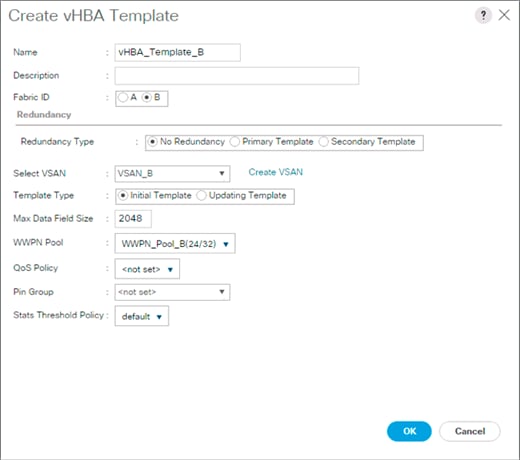

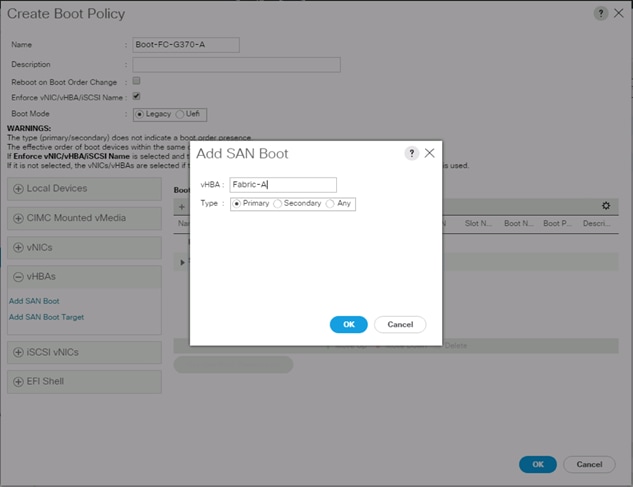

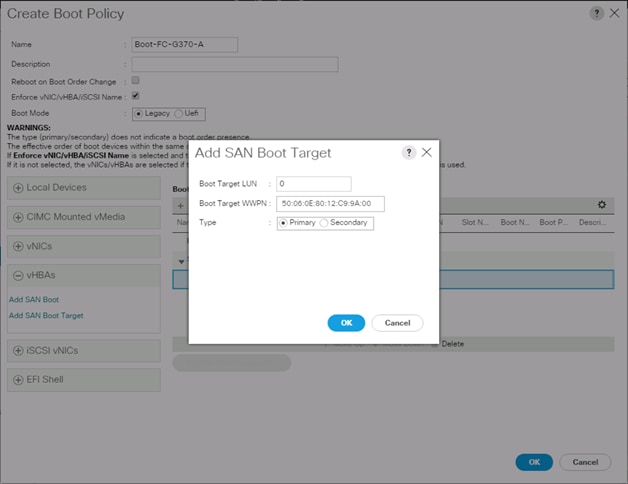

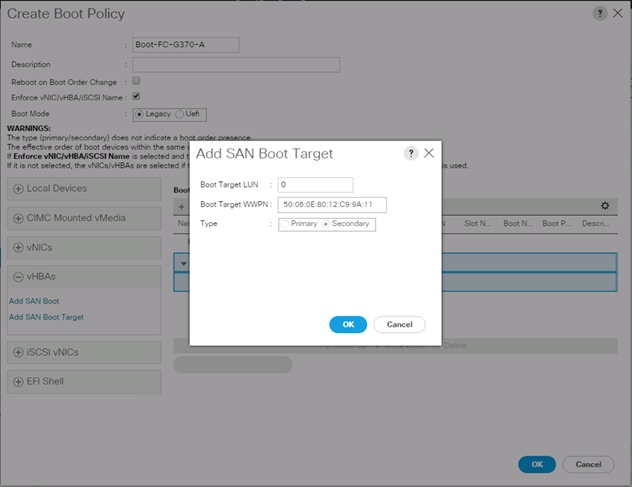

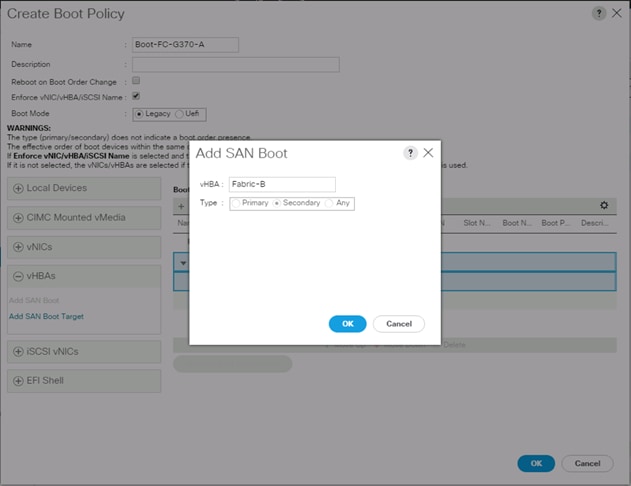

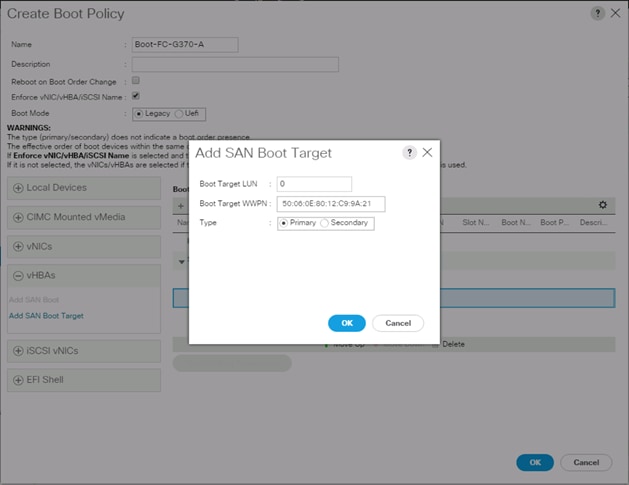

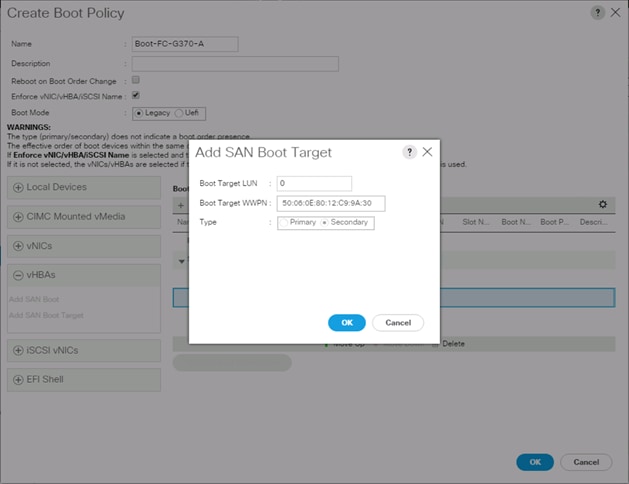

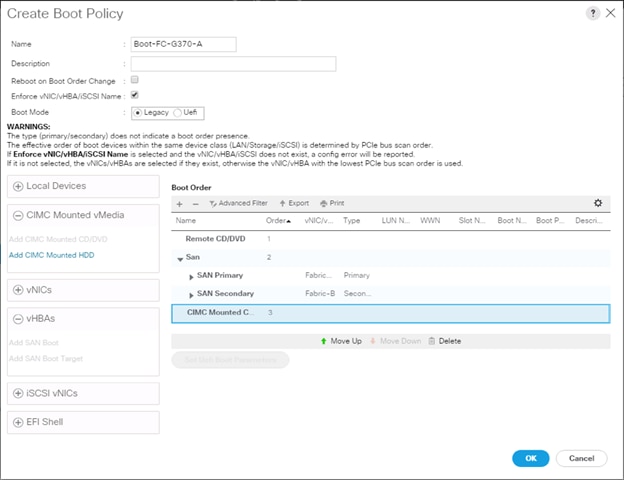

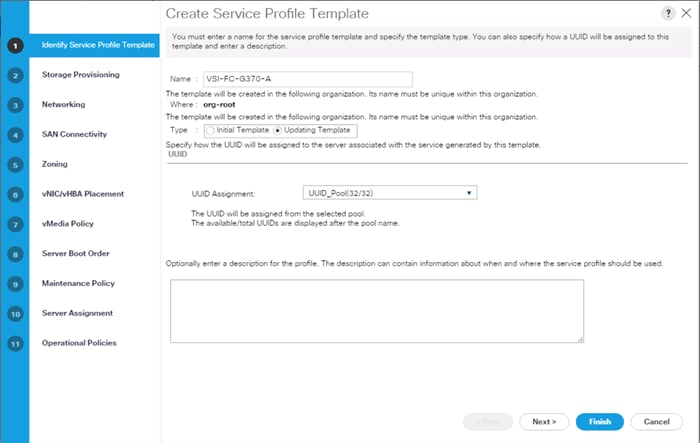

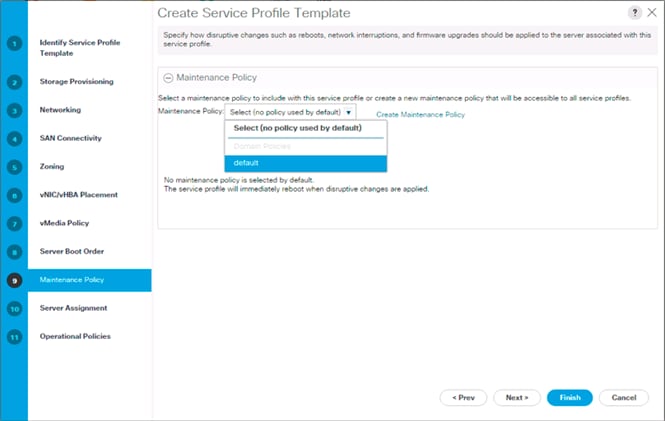

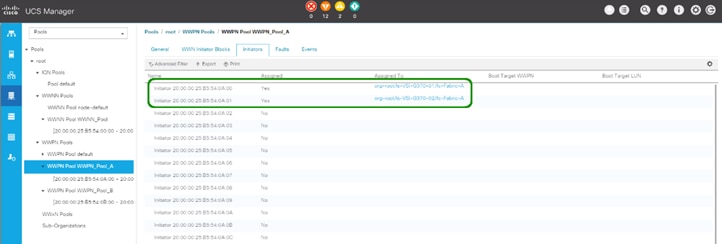

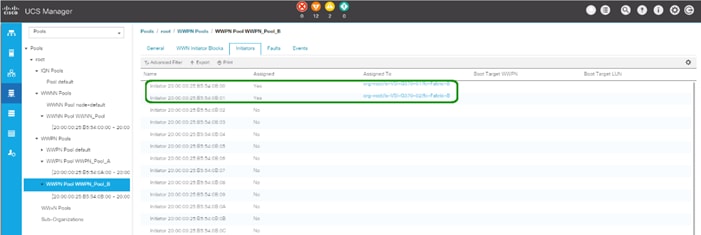

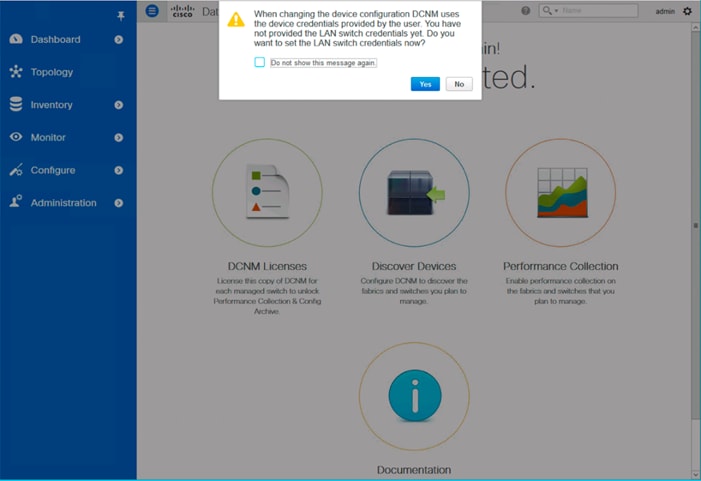

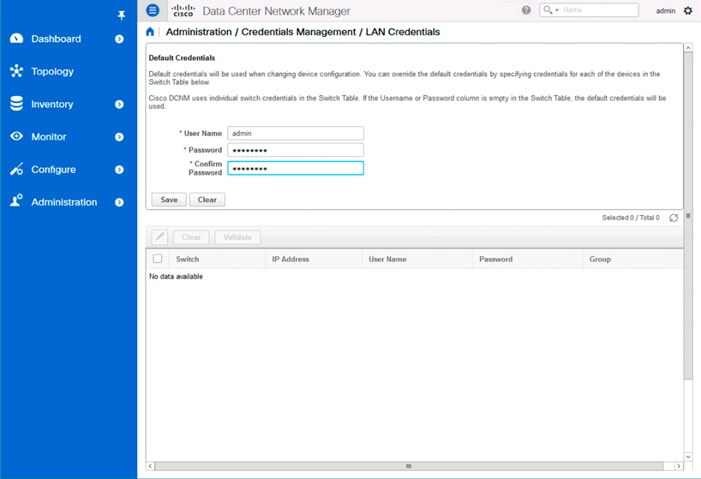

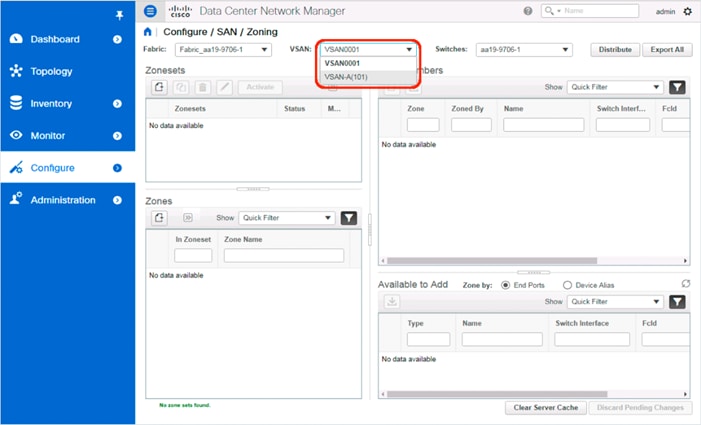

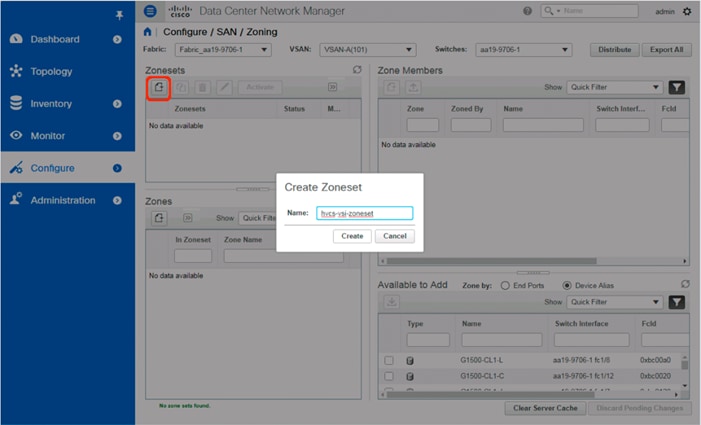

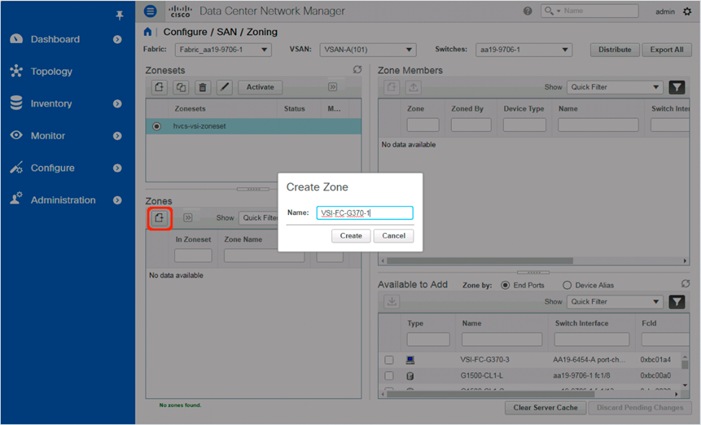

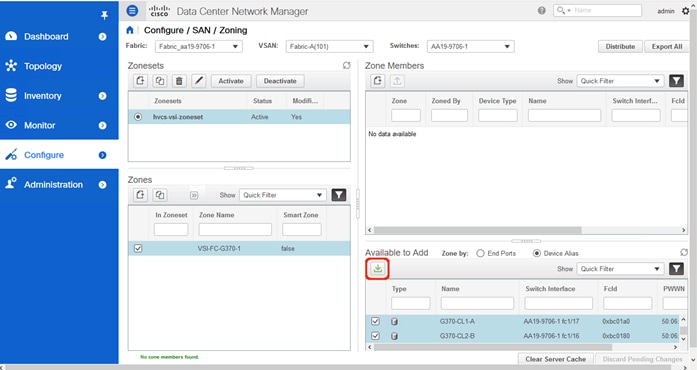

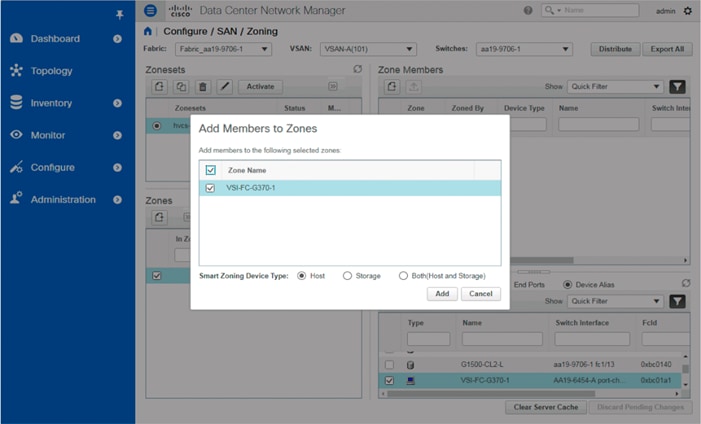

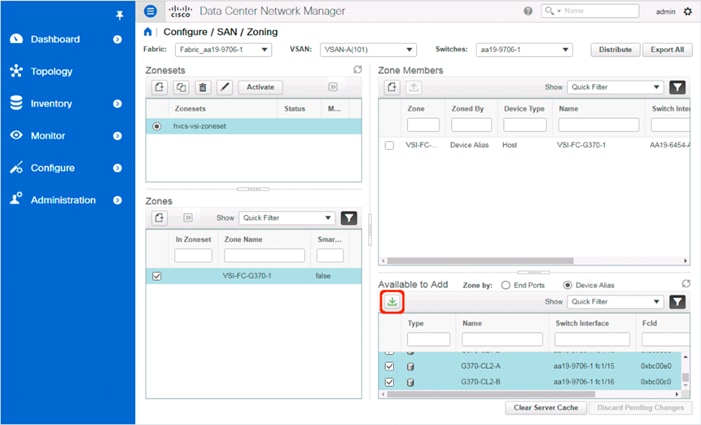

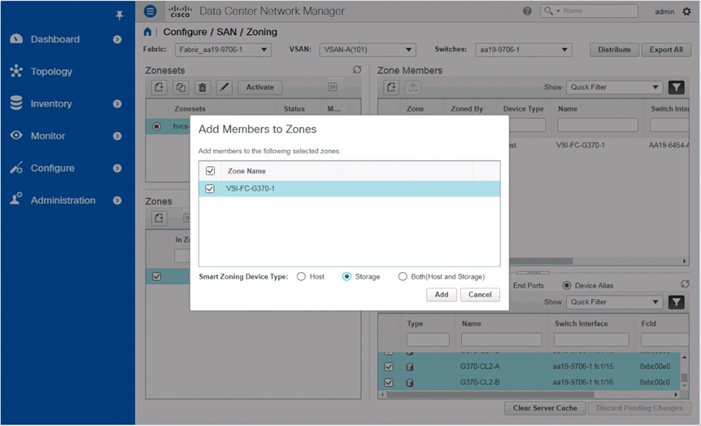

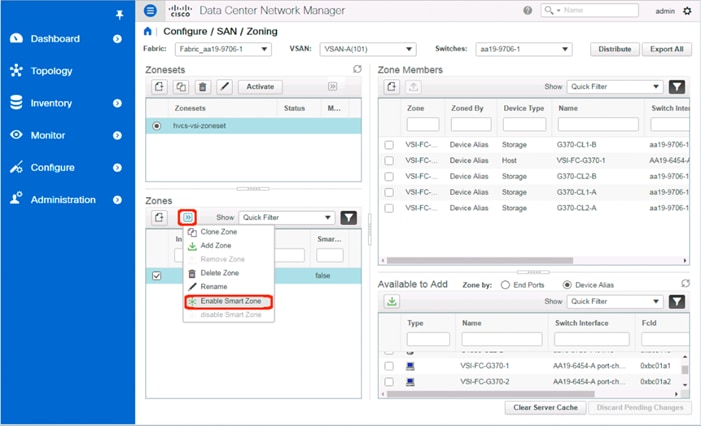

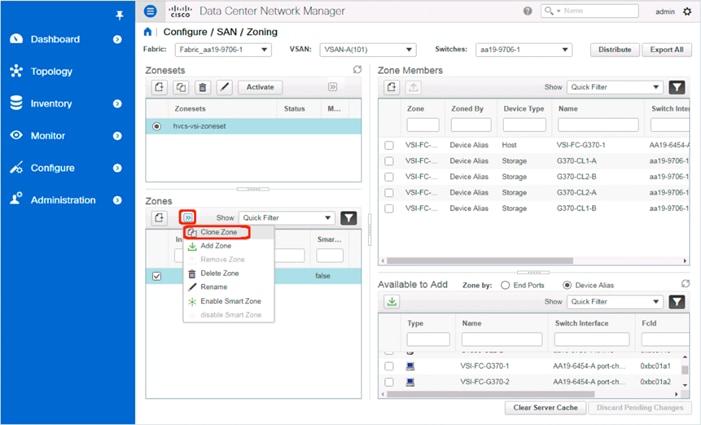

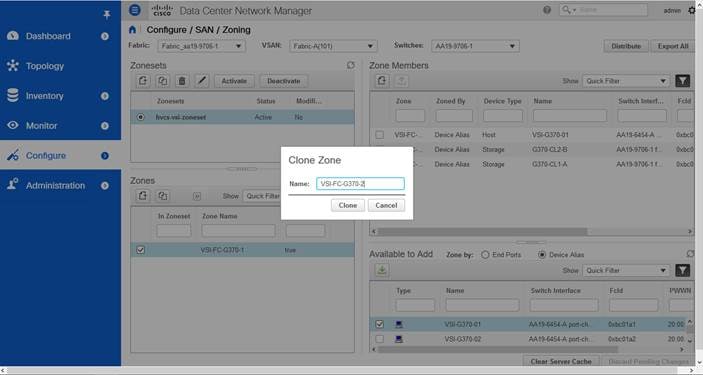

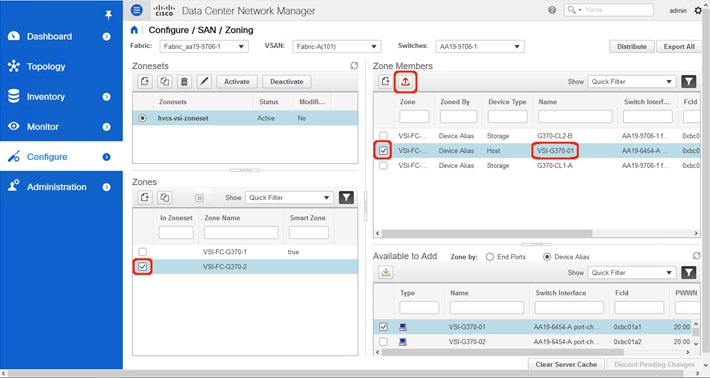

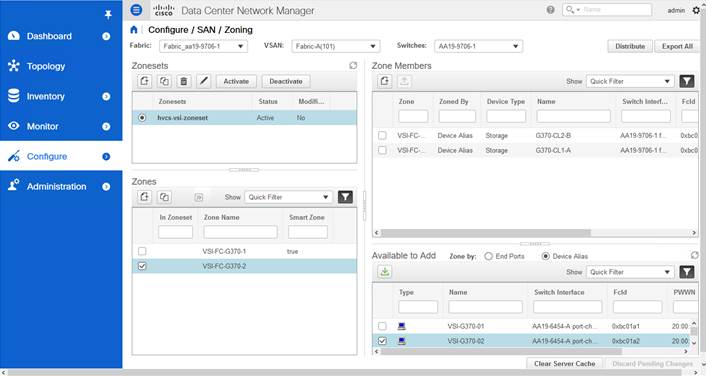

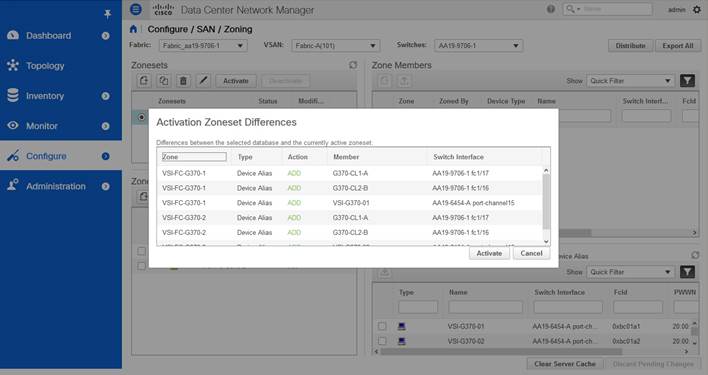

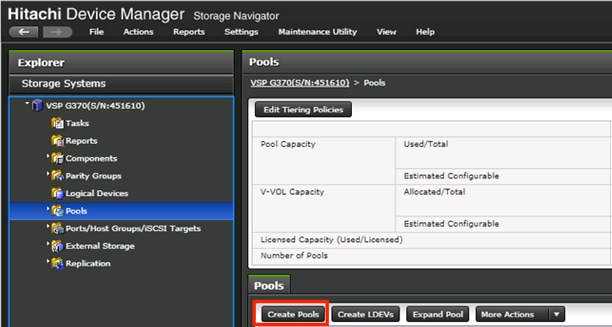

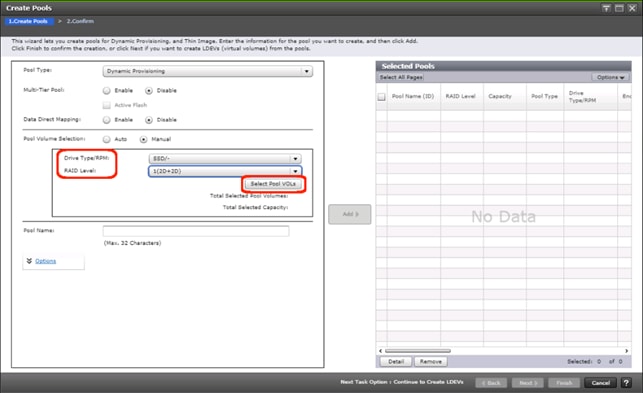

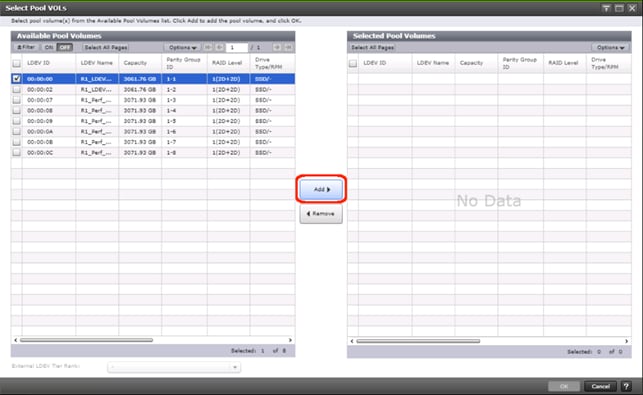

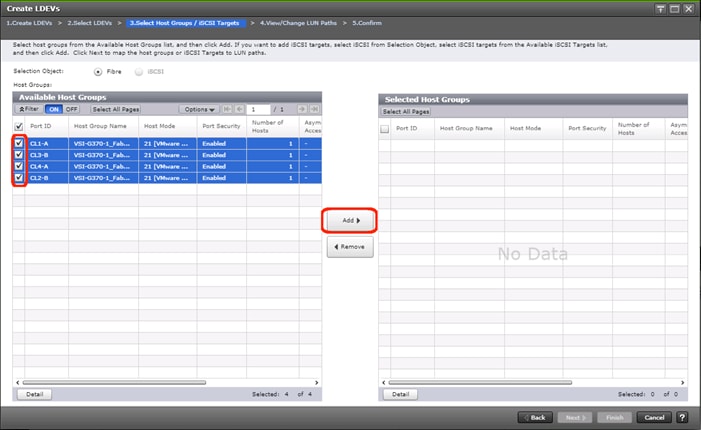

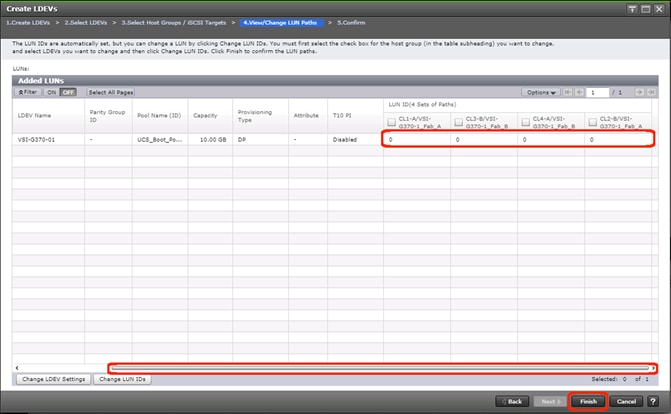

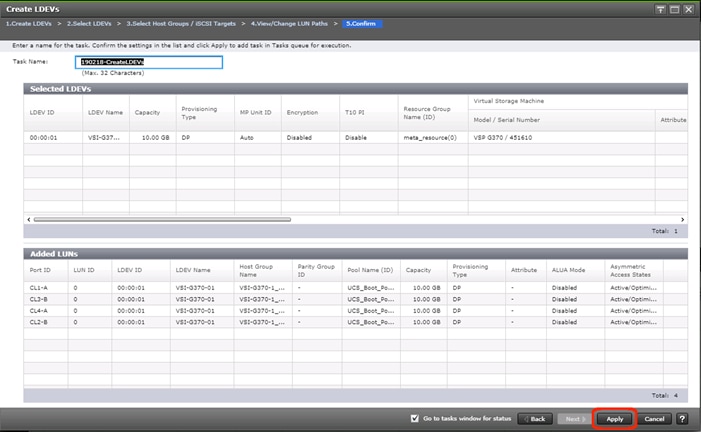

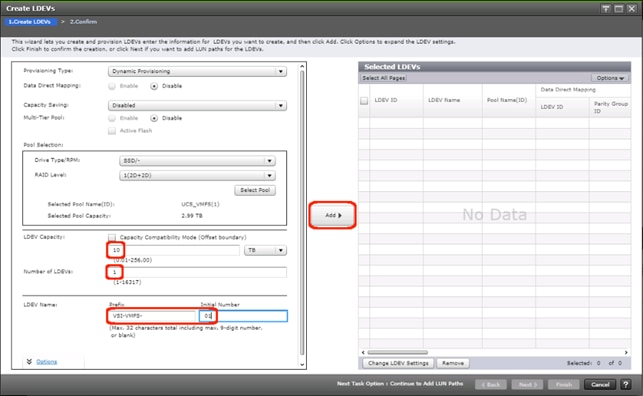

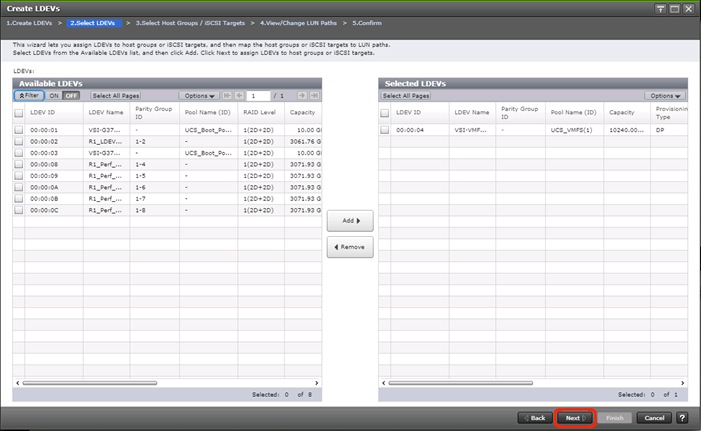

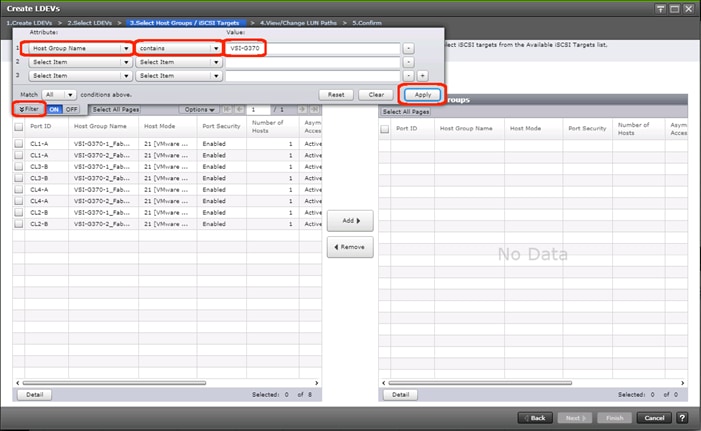

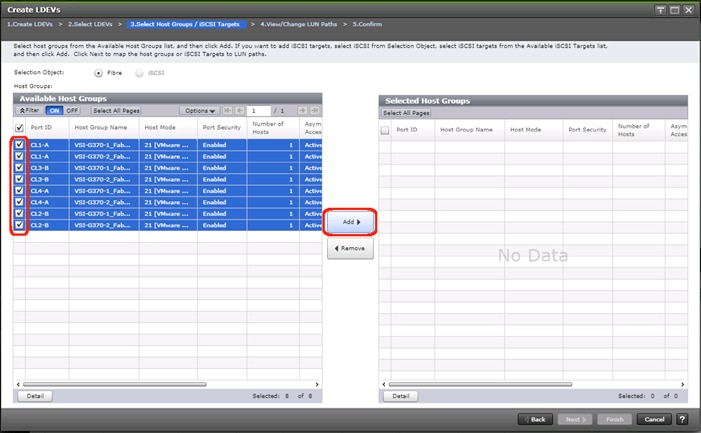

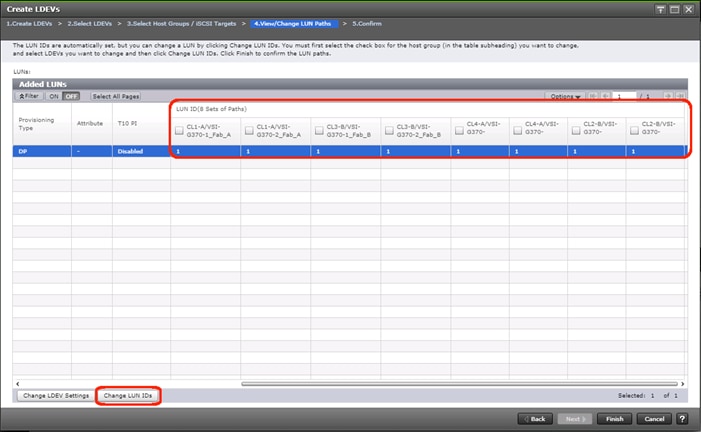

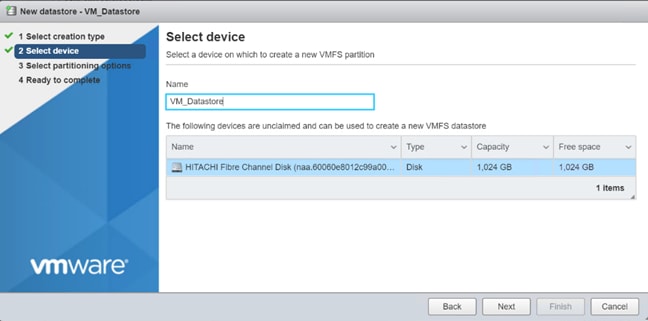

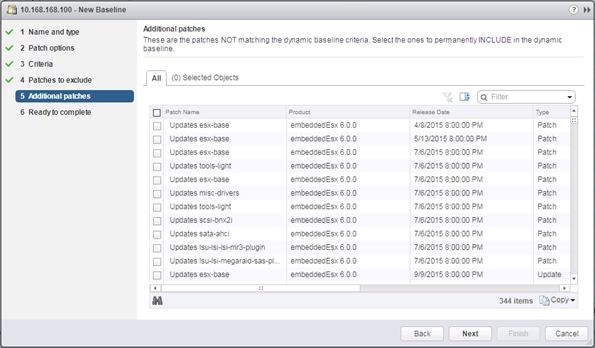

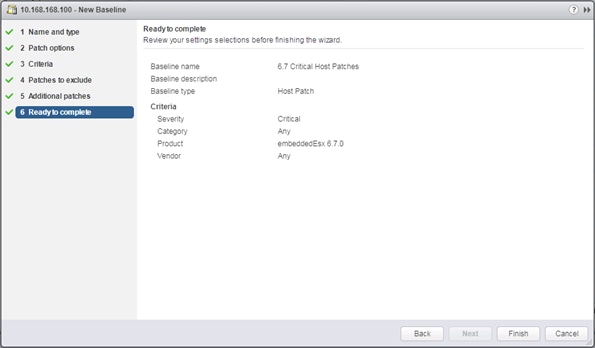

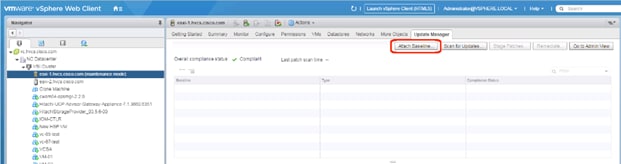

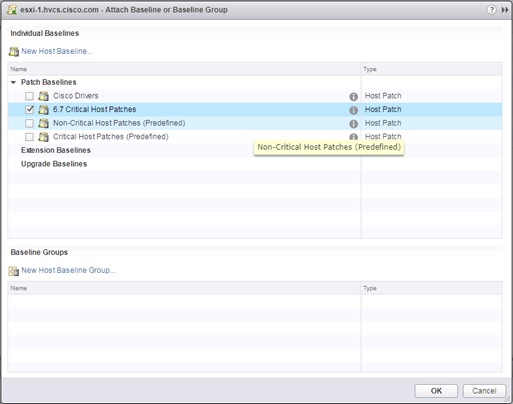

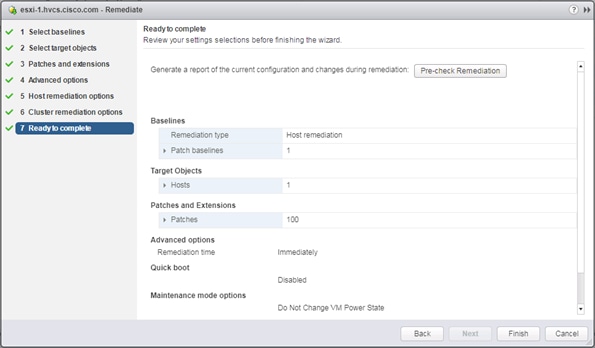

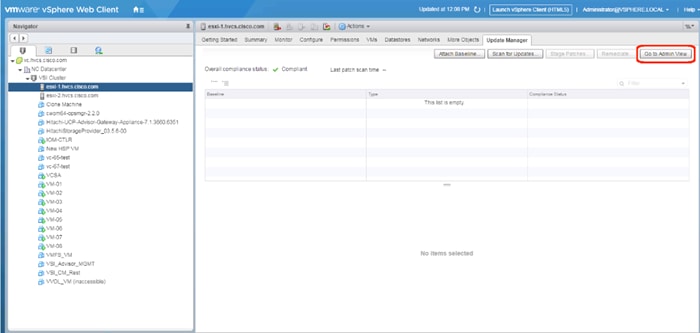

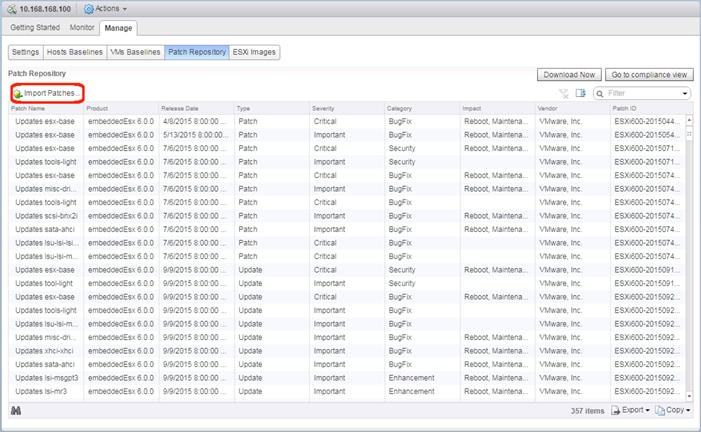

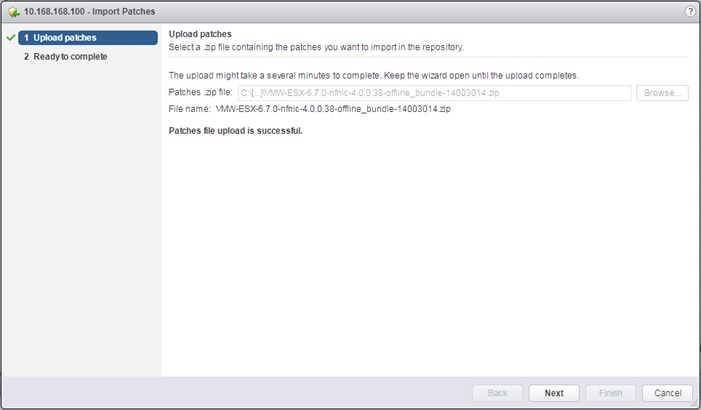

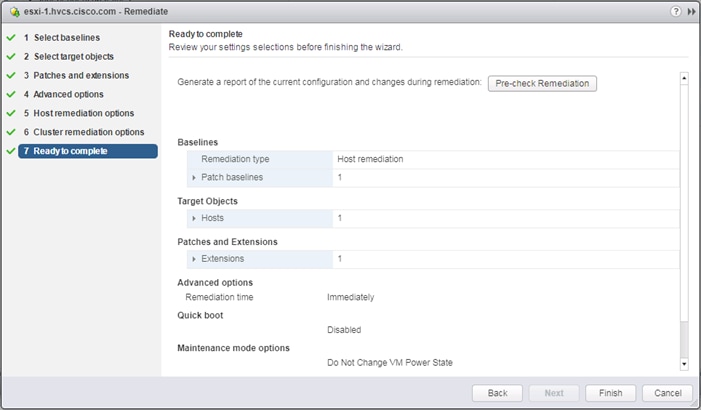

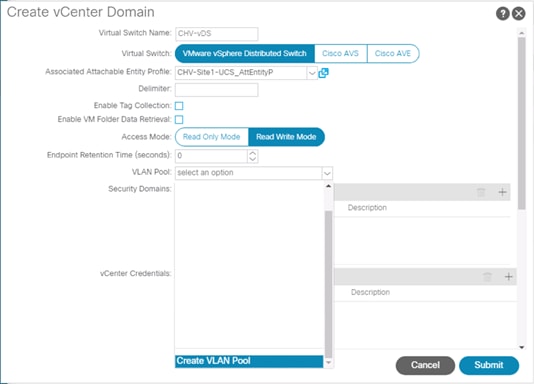

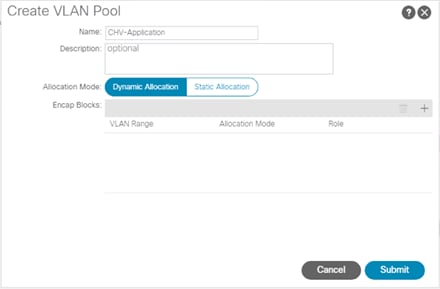

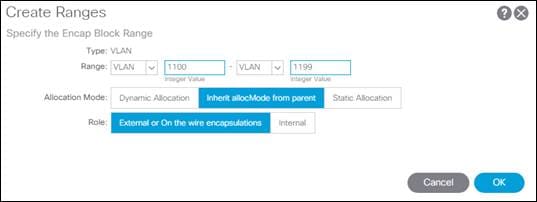

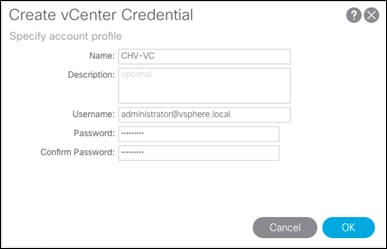

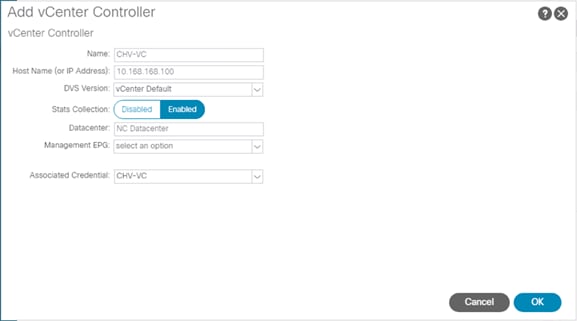

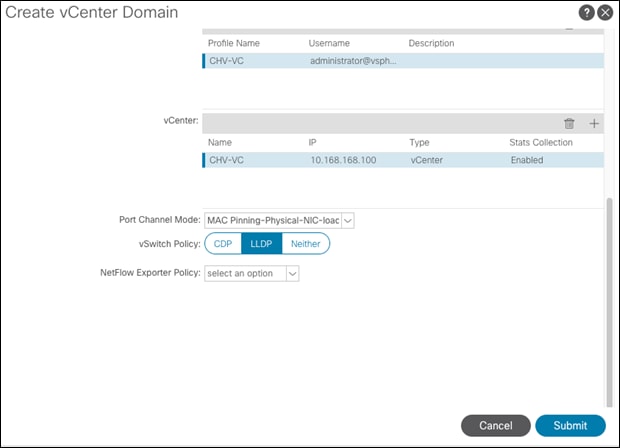

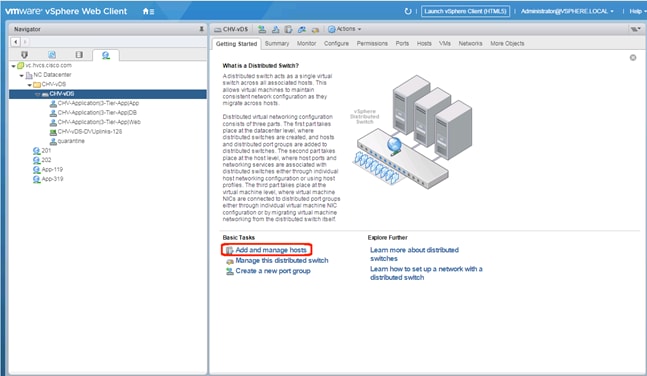

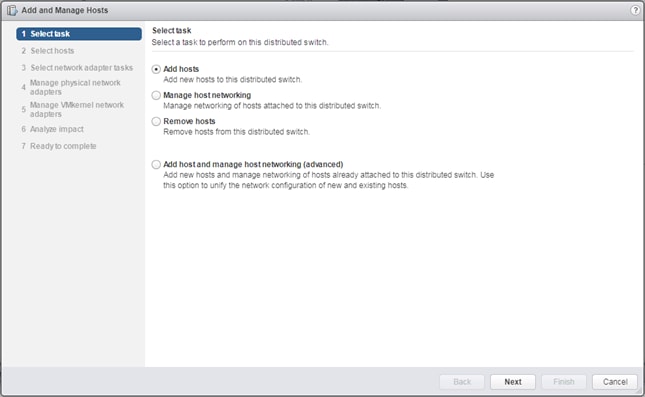

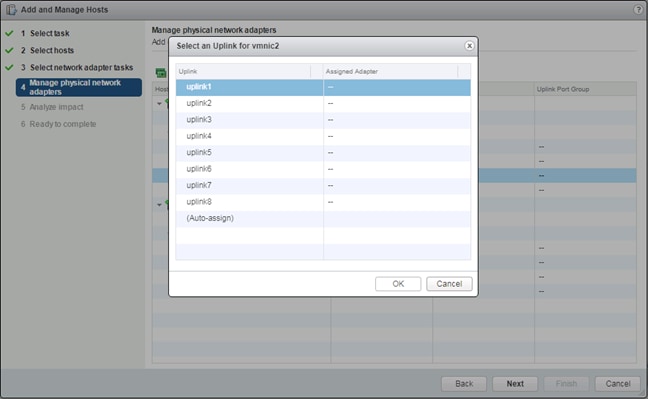

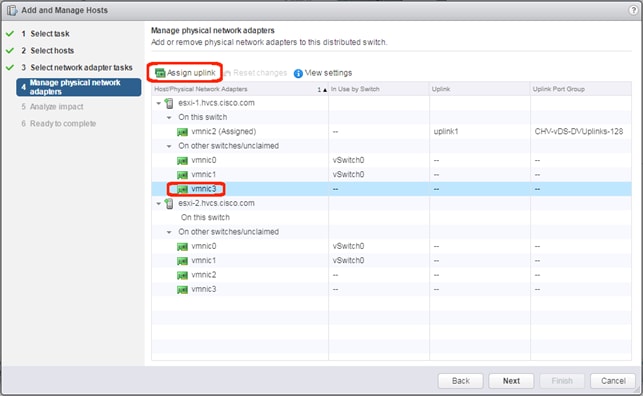

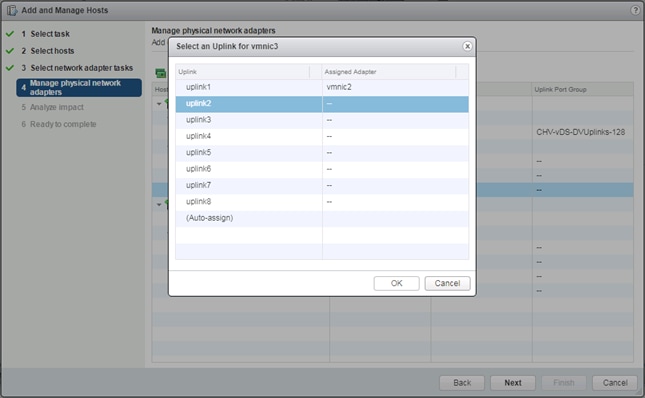

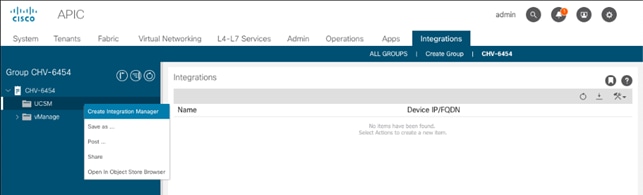

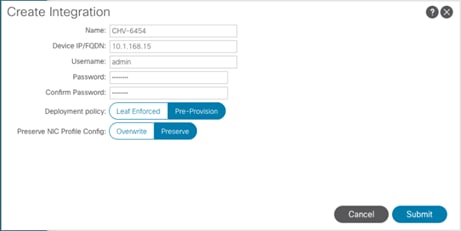

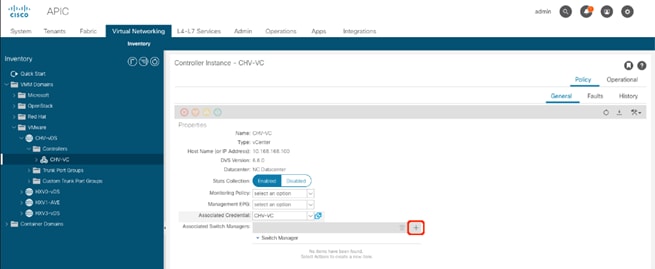

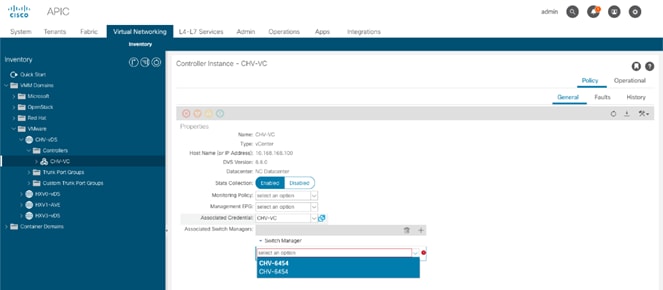

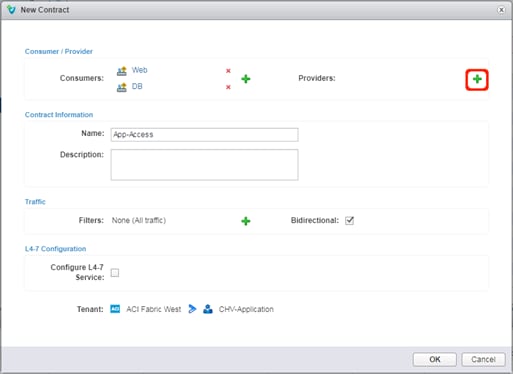

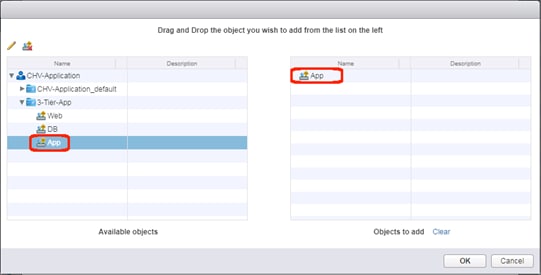

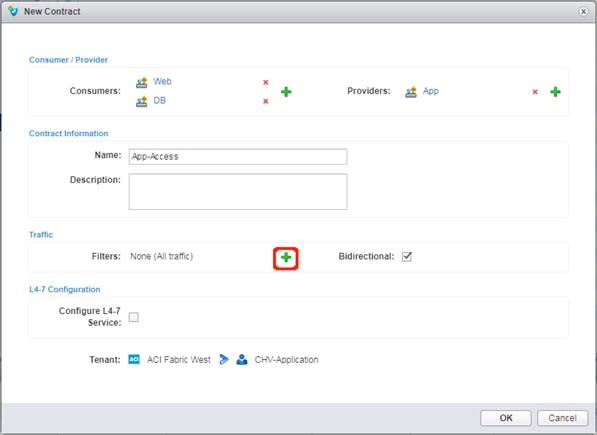

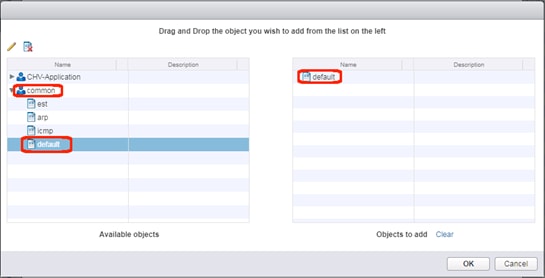

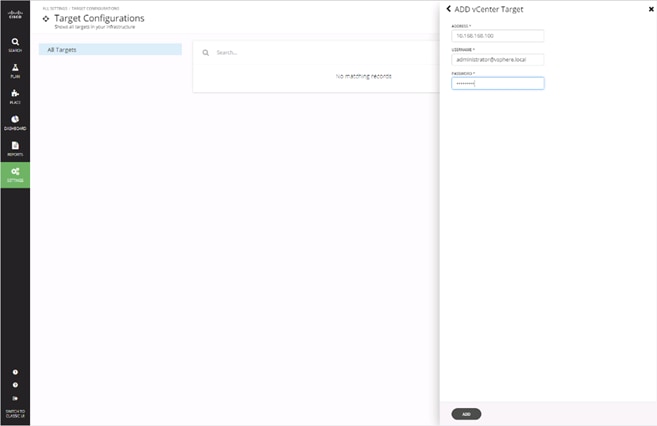

4. Click Finish to complete creating the EPG.