Renewable Energy: Offshore Wind Farm 1.3 Design Guide

Introduction to Offshore Wind Farm

Cisco Solution for Renewable Energy Offshore Wind Farms

Offshore Wind Farm Cisco Validated Design

Scope of Wind Farm Release 1.3

New Capabilities in Offshore Wind Farm Release 1.3

Offshore Wind Farm Places in the Network

Wind Farm Actors in the Network

Solution Design Considerations

TAN Non-HA Design Considerations

TAN High Availability Design with REP

Resilient Ethernet Protocol Ring

Offshore Substation Network and Building Blocks

OSS DMZ and Third-Party Network

Network VLANs and Routing Design

BGP EVPN VXLAN Network Design for Turbine Network

Turbine network Underlay Design

Turbine network VXLAN Overlay Design

Cisco Wi-Fi Architecture for Off-Shore Windfarm

Use case for Service Operations Vessel Wireless Backhaul within a Wind Farm

Hardware Redundancy and High-Availability

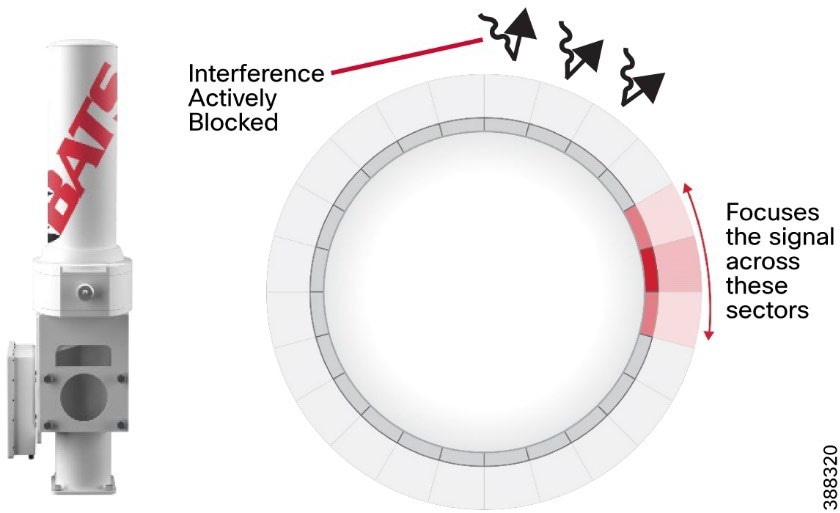

BATS FAST 5.8 Intelligent Antenna System

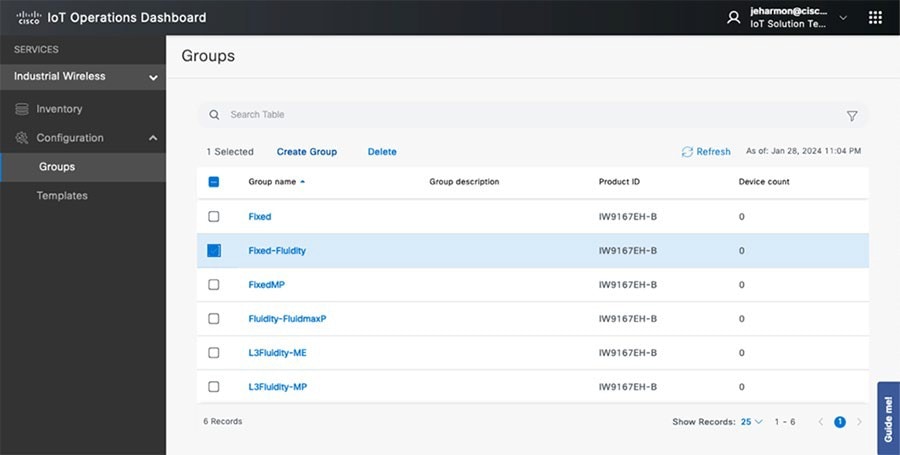

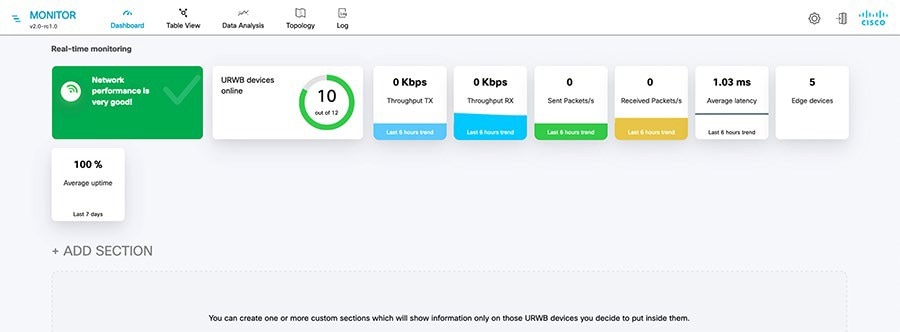

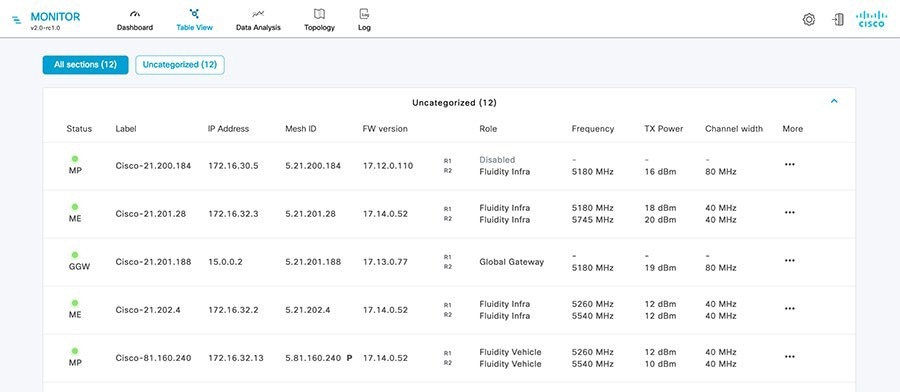

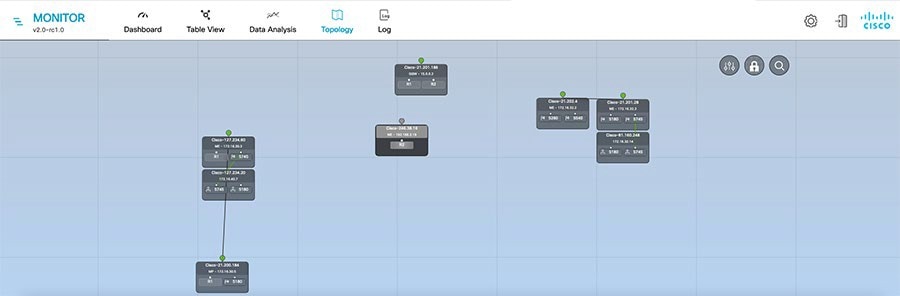

IW Monitor: Centralized Management of URWB Infrastructure

URWB: Terminology and Miscellaneous Configurations

URWB Mobility Architecture: Layer 2 Fluidity

Fluidity Advanced Handoff Tuning for SOV Radios

URWB Fluidity Advanced: Large Network Optimization

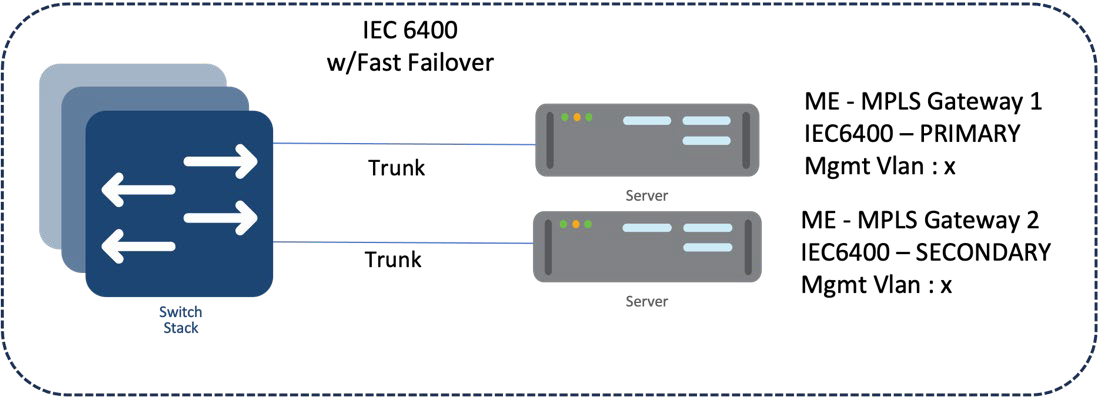

IW9167E/IEC6400 Mesh End Redundancy and High Availability

URWB Access Layer: Fast Convergence on Failure

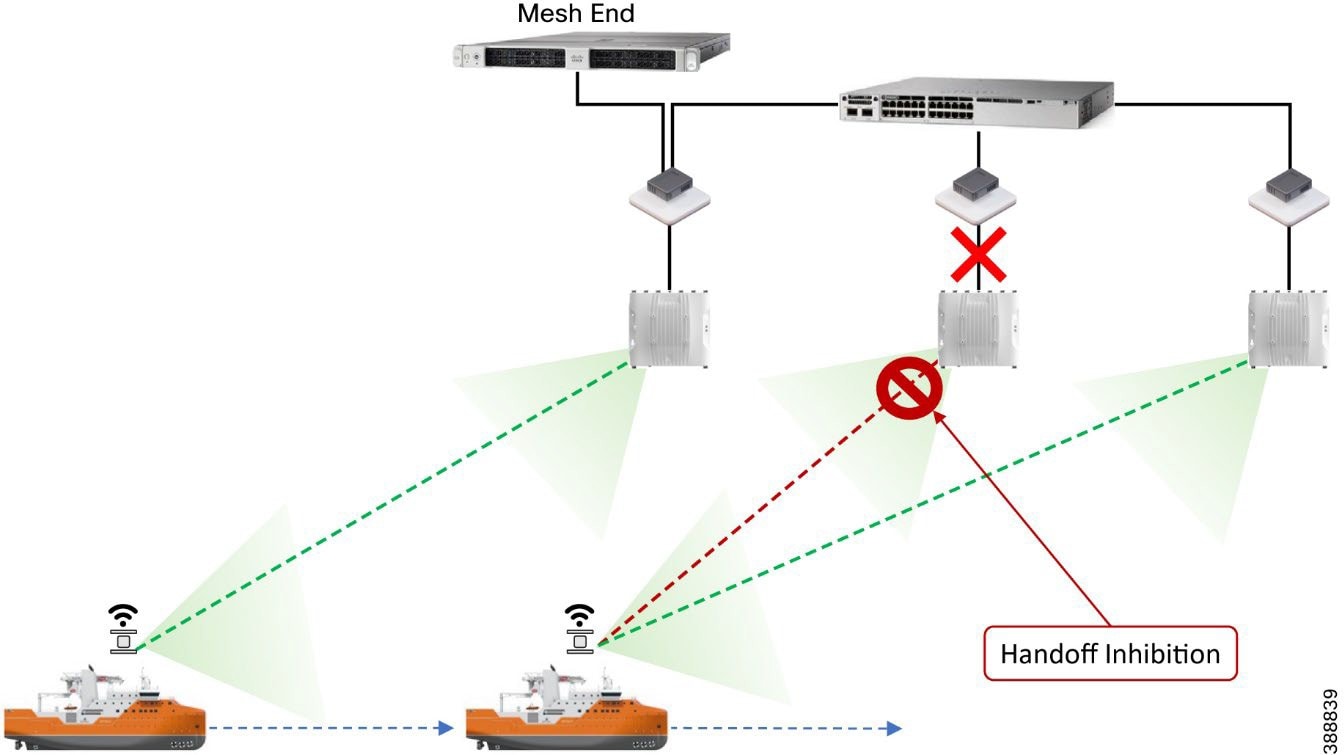

Link Backhaul Check: Handoff Inhibition

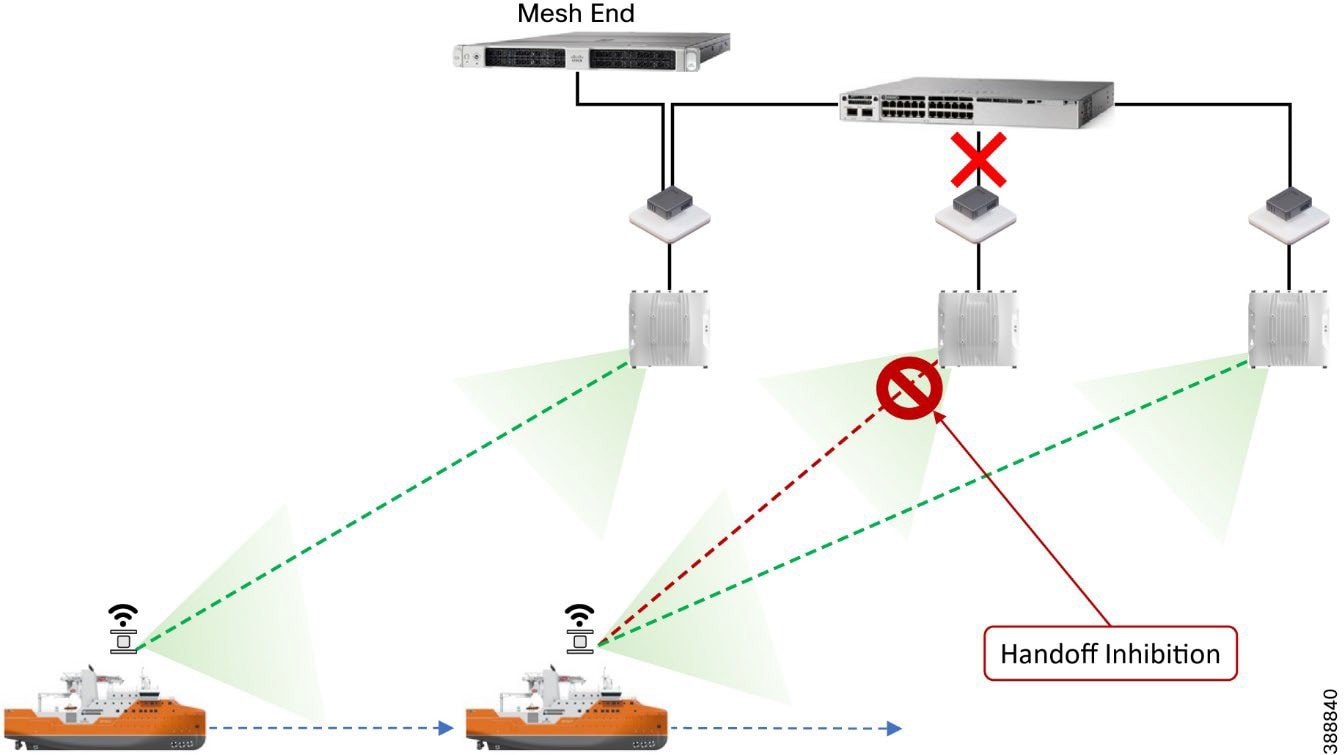

Mesh-End Backhaul Check: Handoff Inhibition

Onboard Radio Redundancy: Failover and Recovery

SCADA Applications and Protocols

Open Platform Communications Unified Architecture

Turbine Operator Network Design

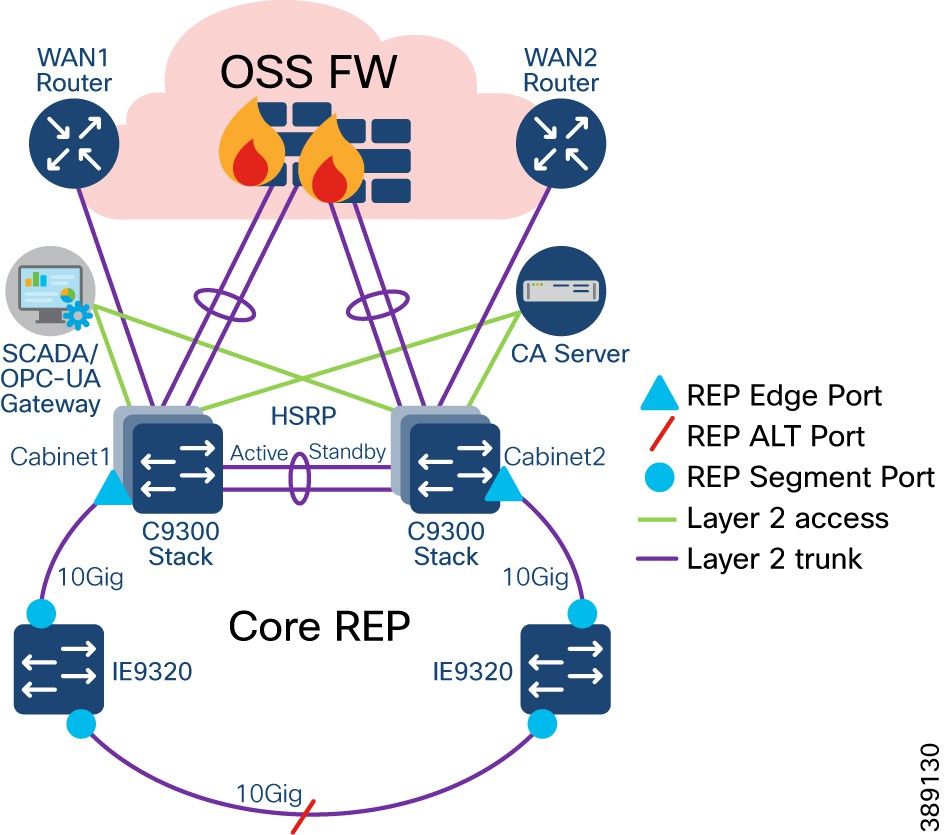

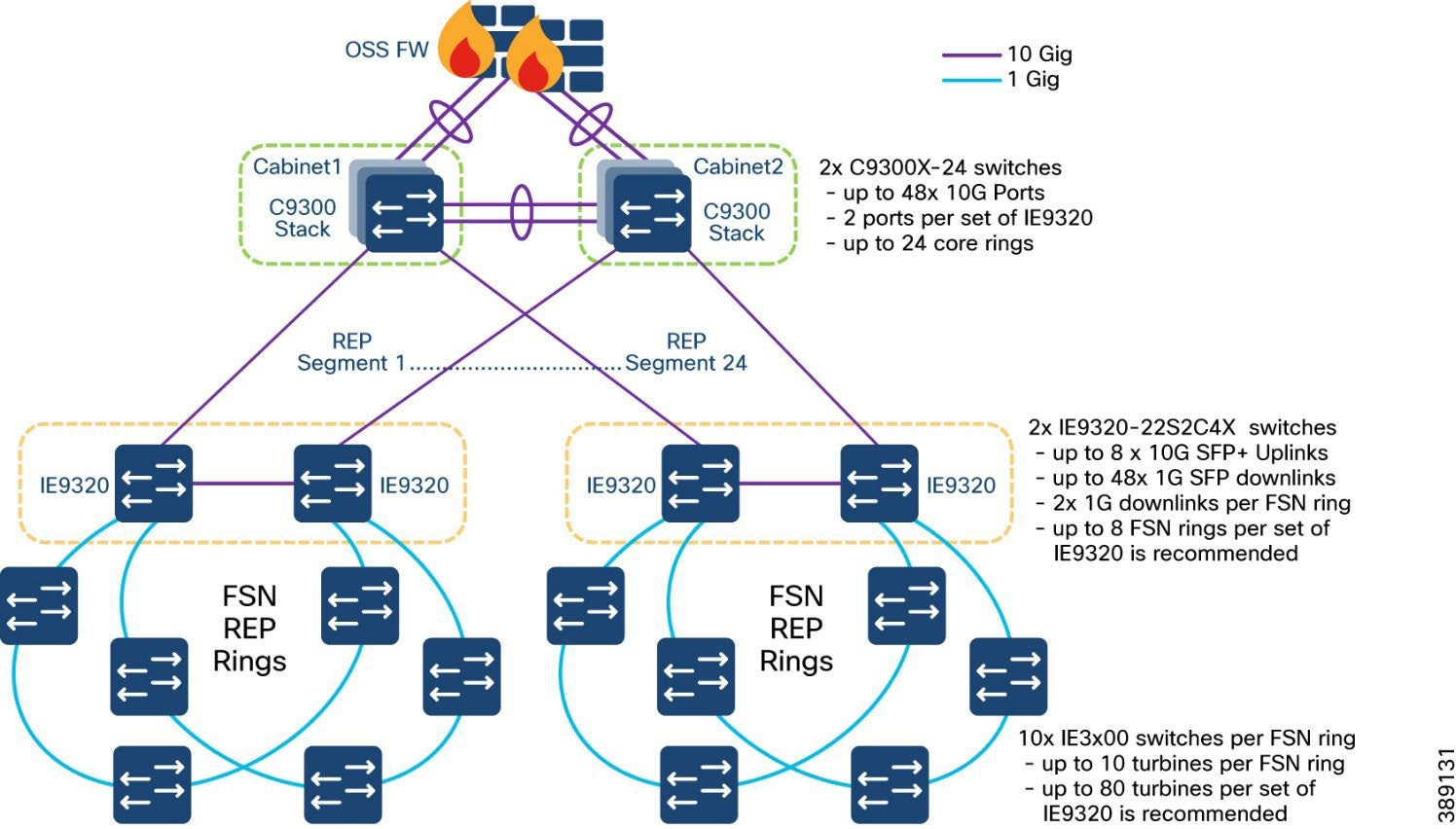

OSS (third-party) Turbine Operator Core Network Ring Design

OSS (third-party) Turbine Operator Aggregation Network Design

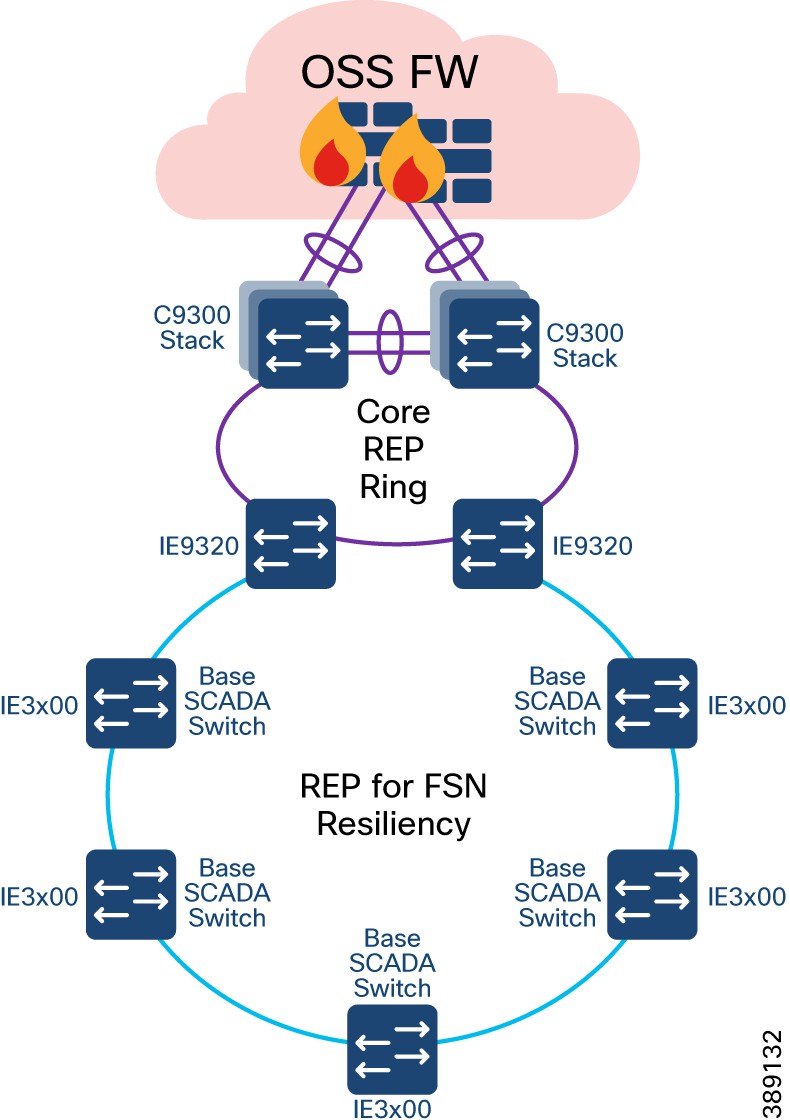

Farm Area SCADA Network (FSN) Design

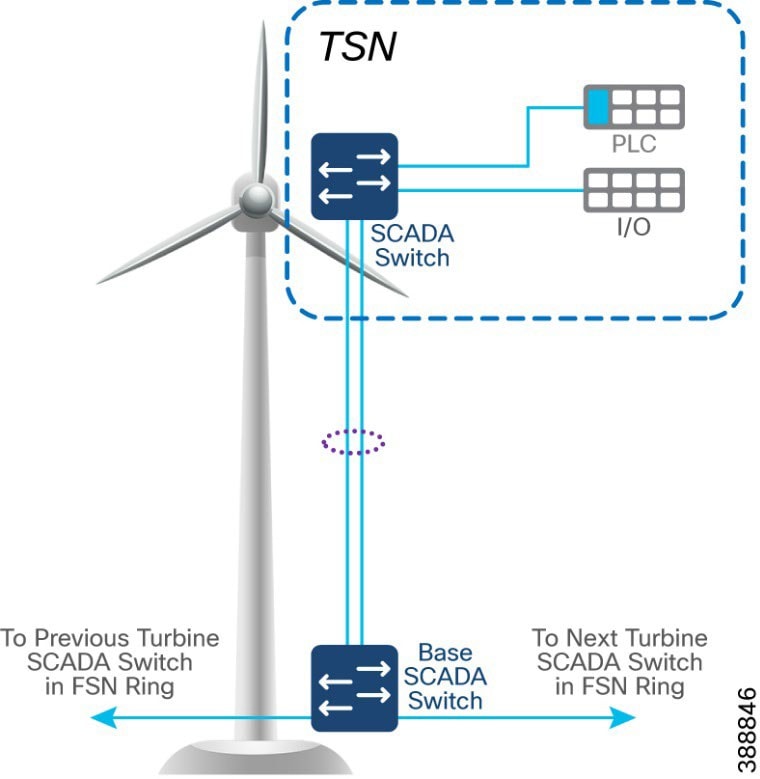

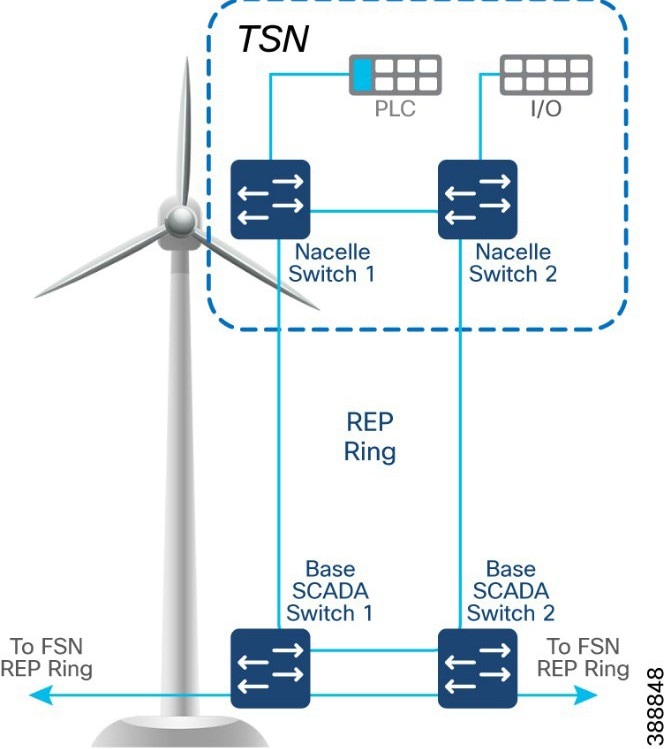

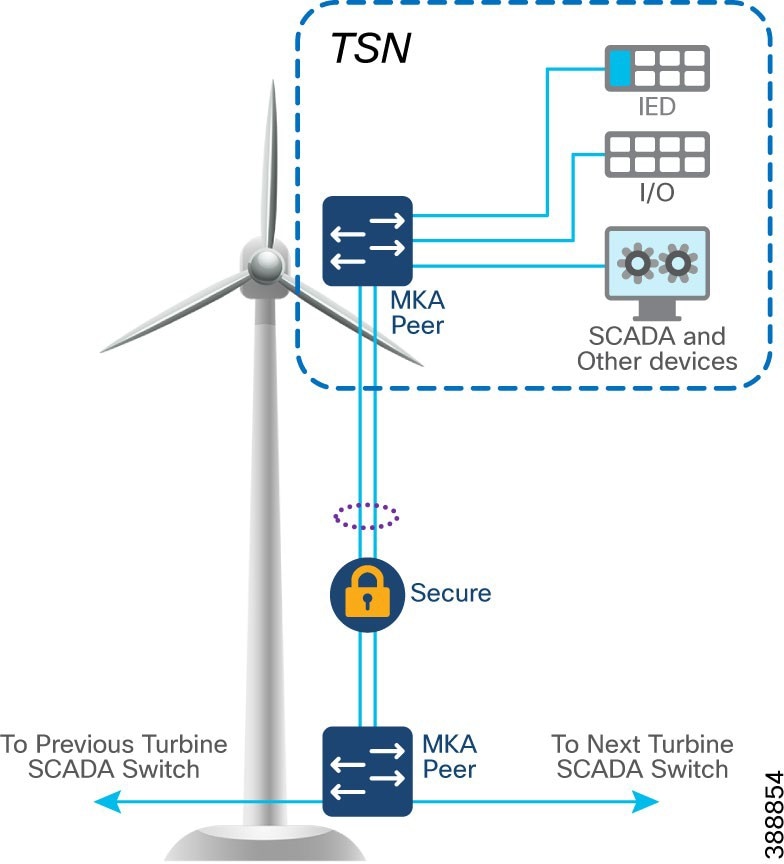

Turbine SCADA Network (TSN) Design

TSN Non-HA Design Considerations

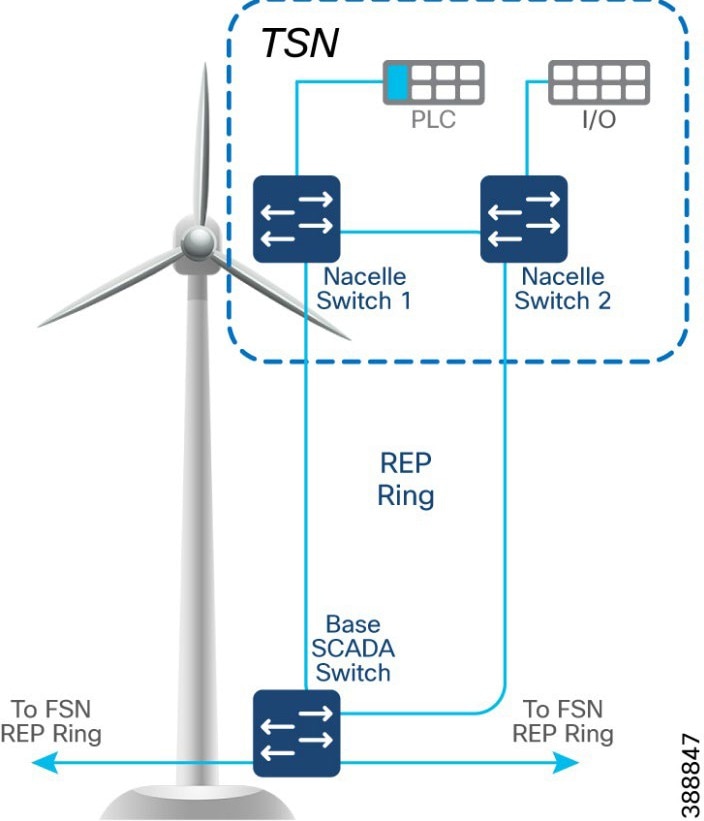

TSN High Availability Design with REP

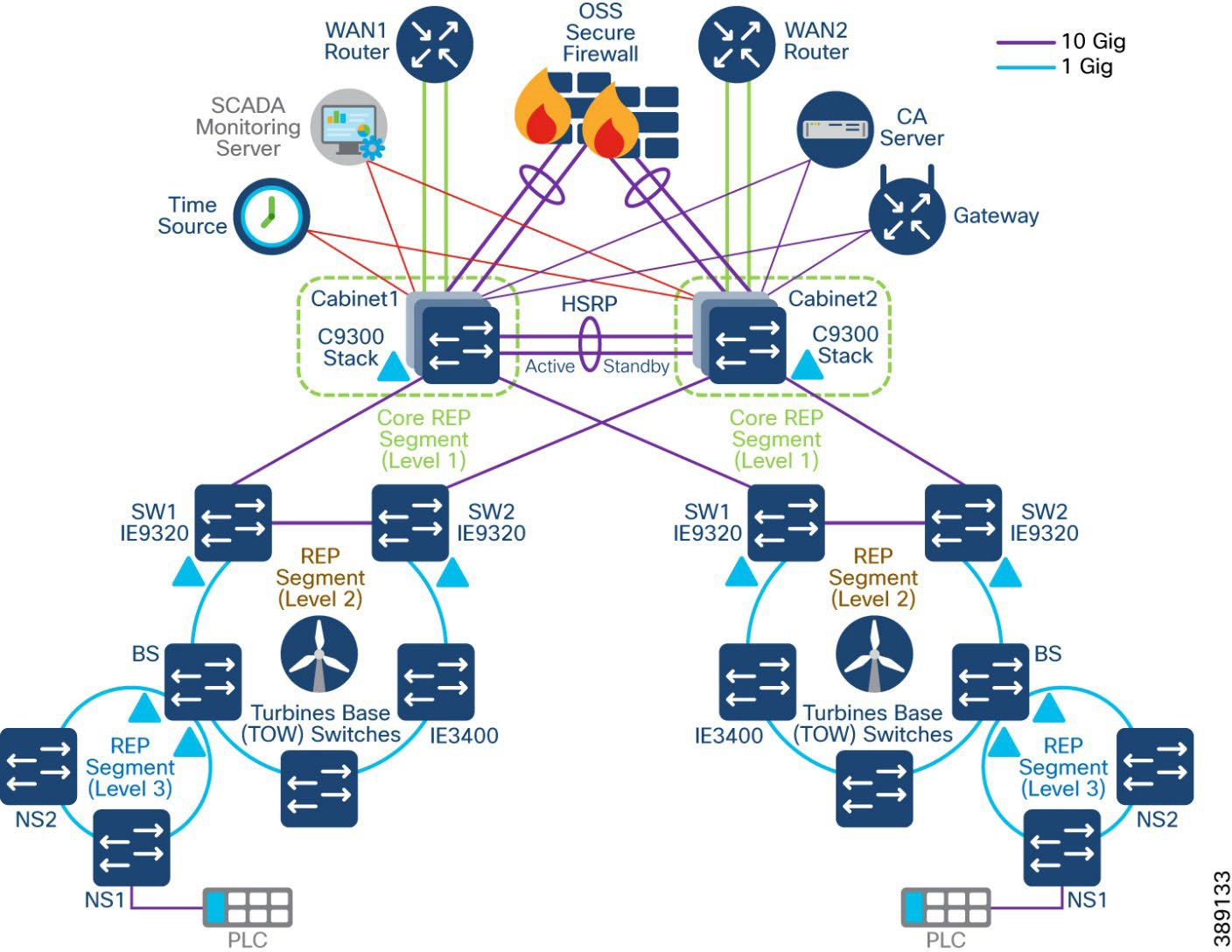

Multi-level advanced REP rings design across Cabinets

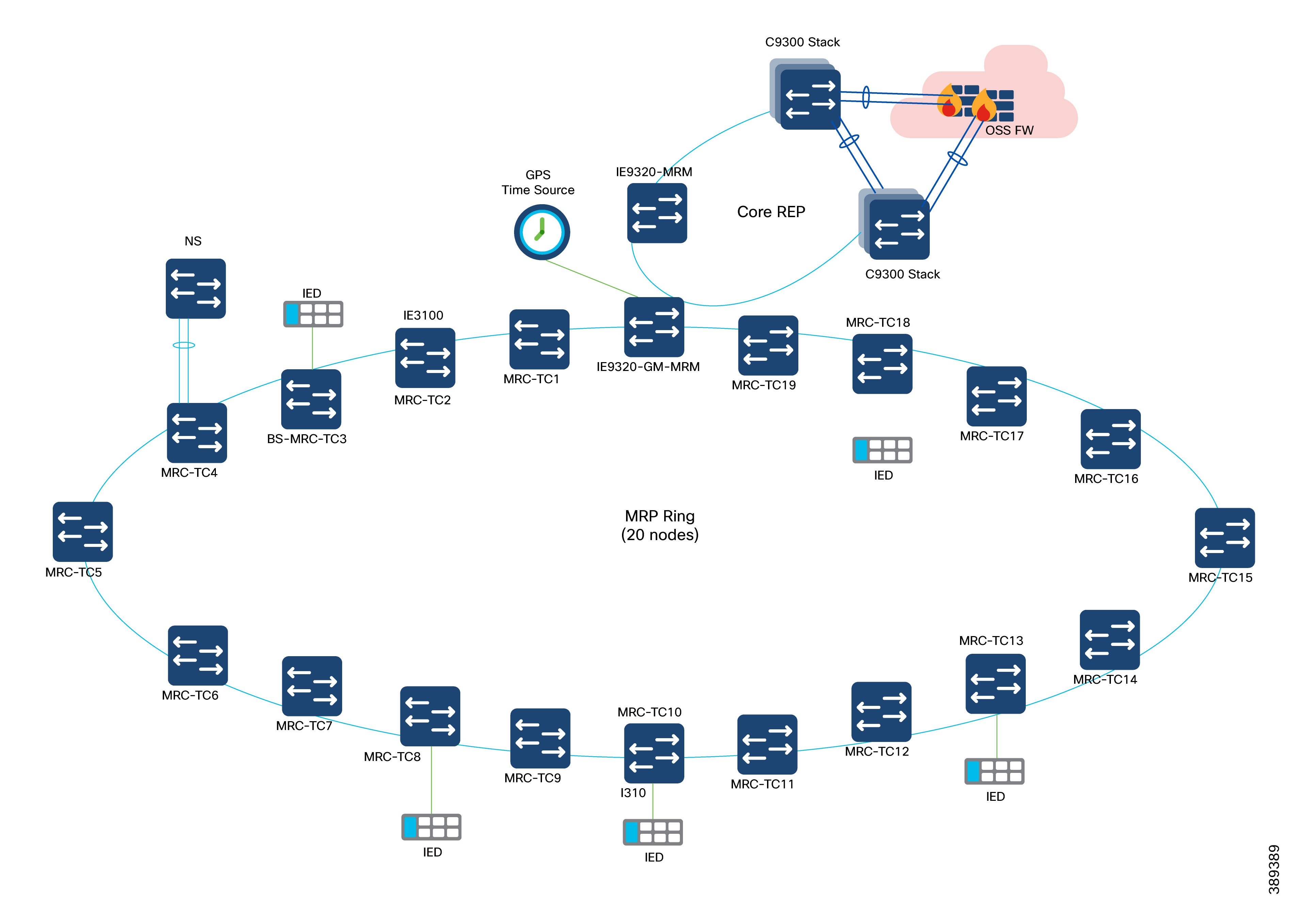

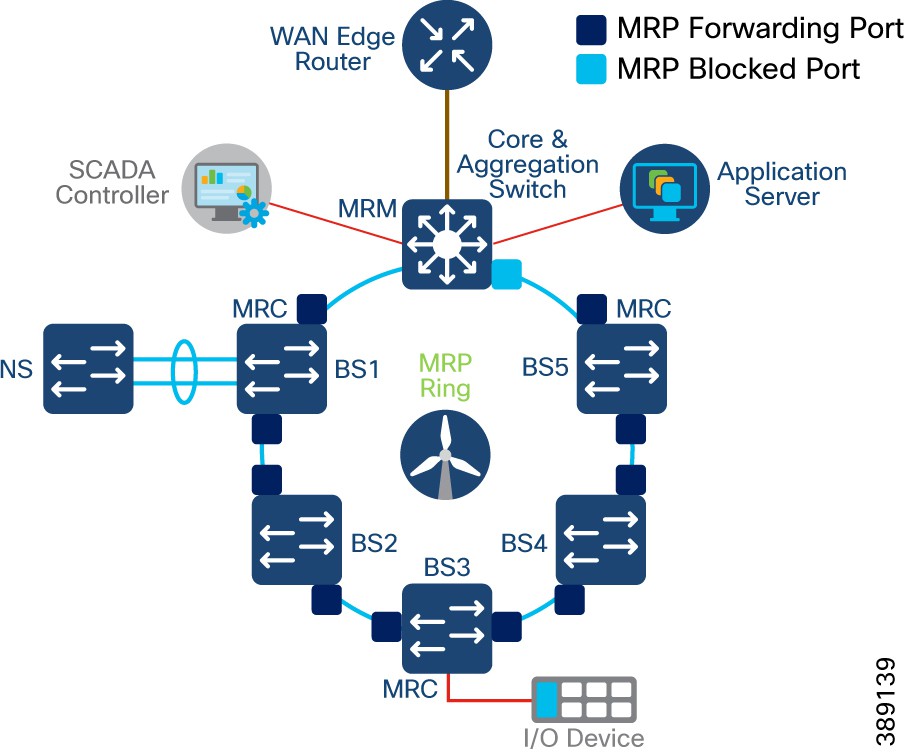

Media Redundancy Protocol (MRP) Ring design for FSN

MRP Ring Design Considerations

Turbine SCADA network timing synchronization

PTP over MRP Design Considerations

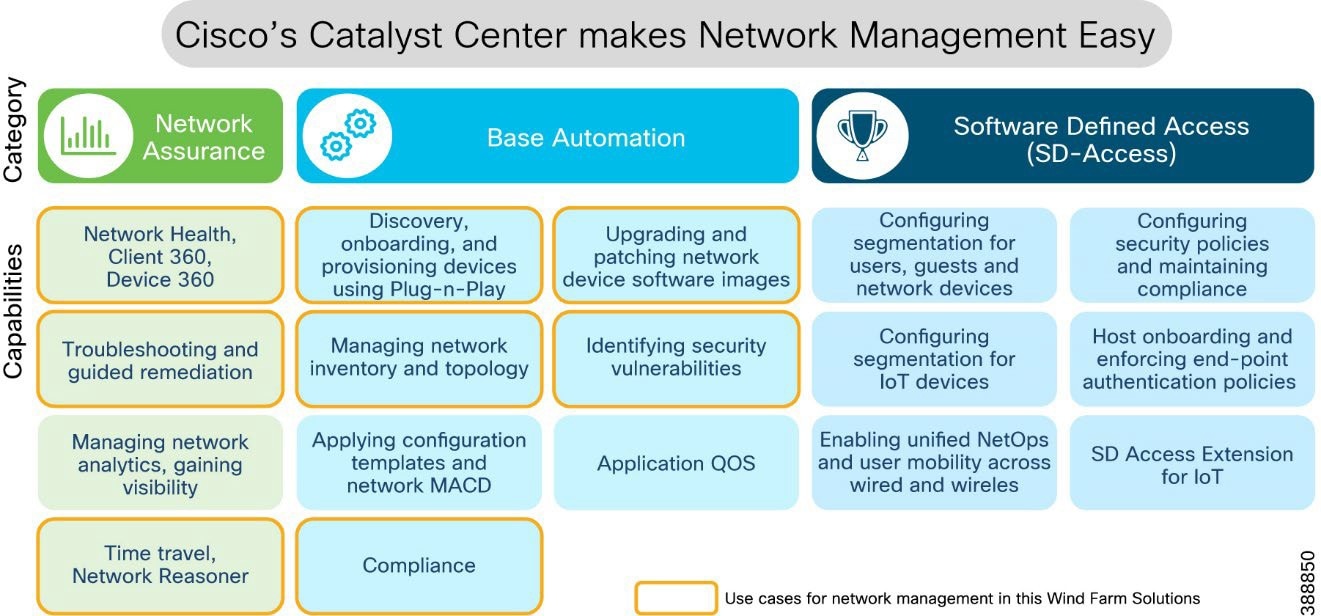

Network Management and Automation

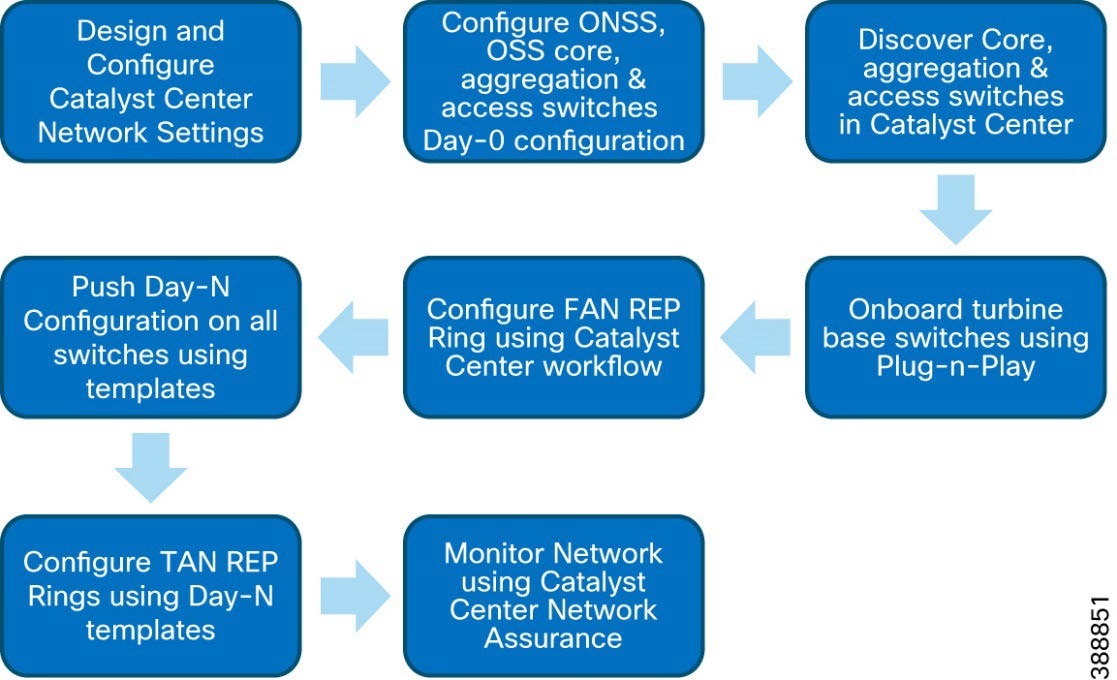

Device Discovery and Onboarding

Device Plug-n-Play Onboarding Using Catalyst Center

FAN REP Ring Provisioning using Catalyst Center REP Automation Workflow

REP Ring Design Considerations, Limitations, and Restrictions

Day N Operations and Templates

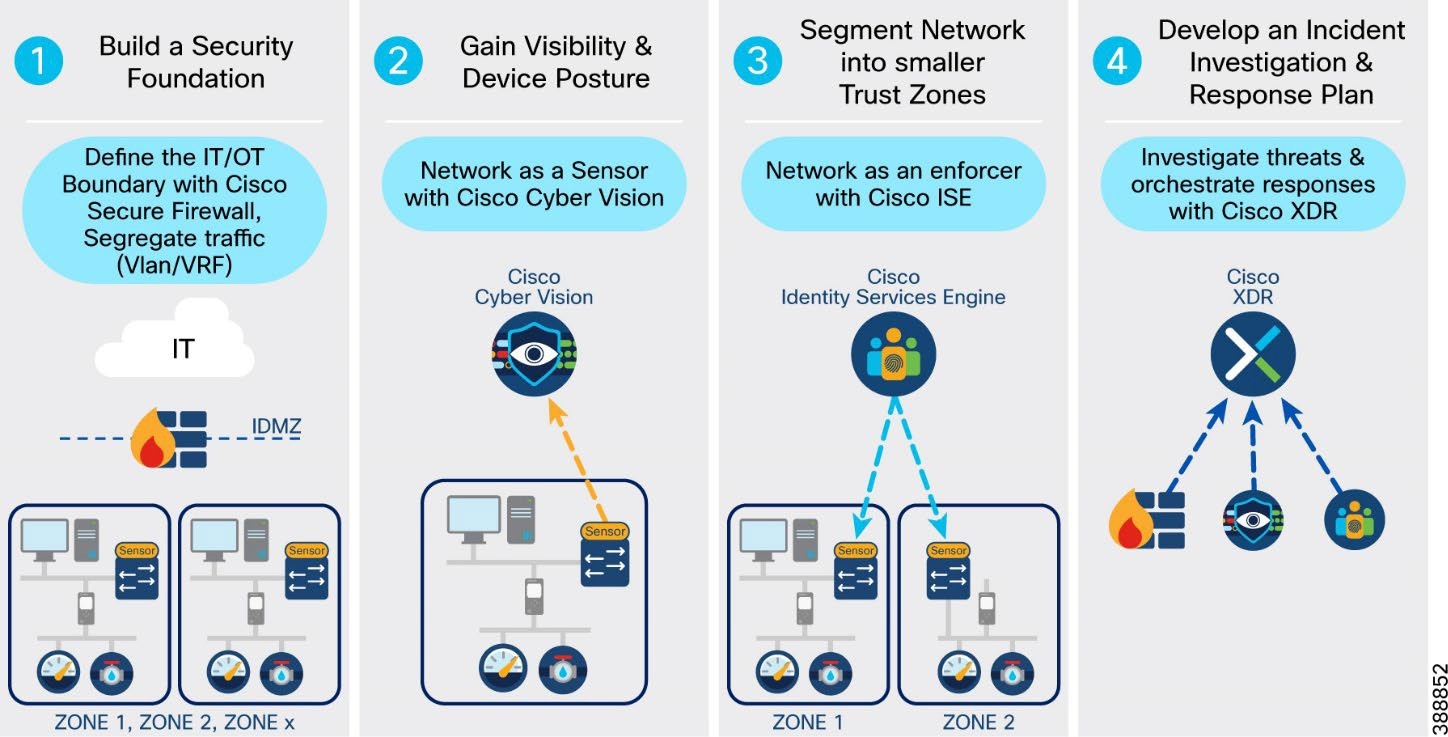

Security Design Considerations

Security Approach and Philosophy

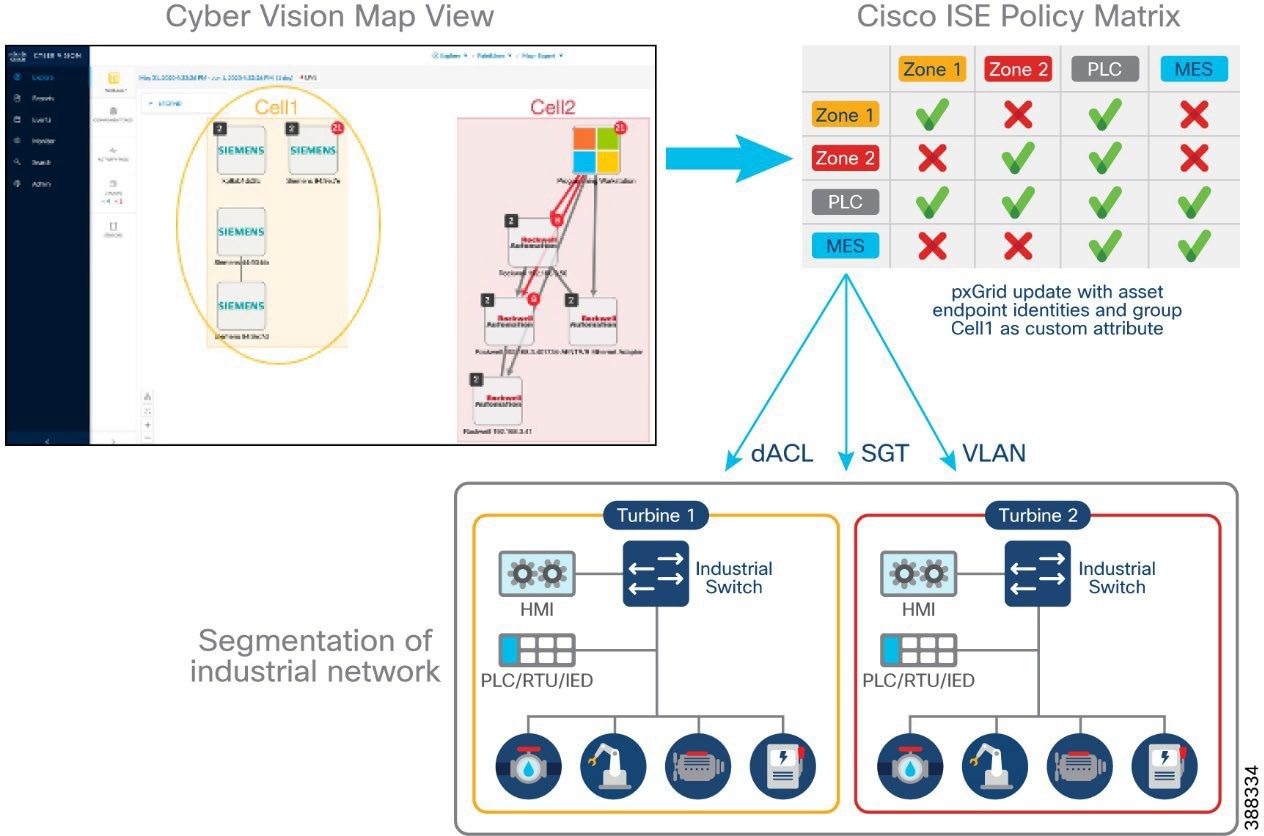

Wind Farm Network Security Use Cases and Features

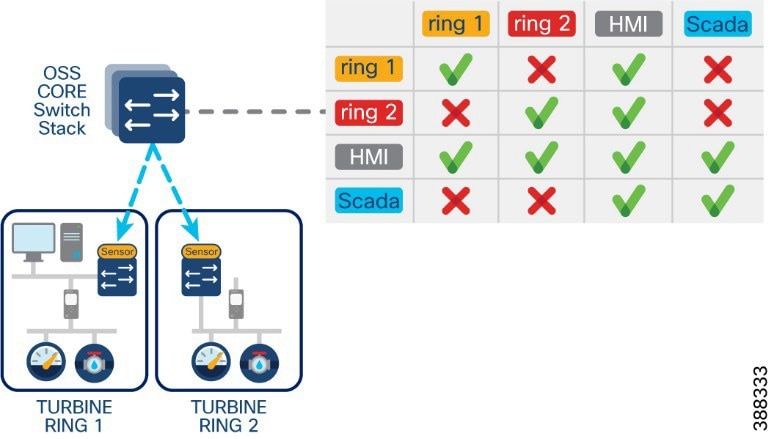

Advantages of Network Segmentation

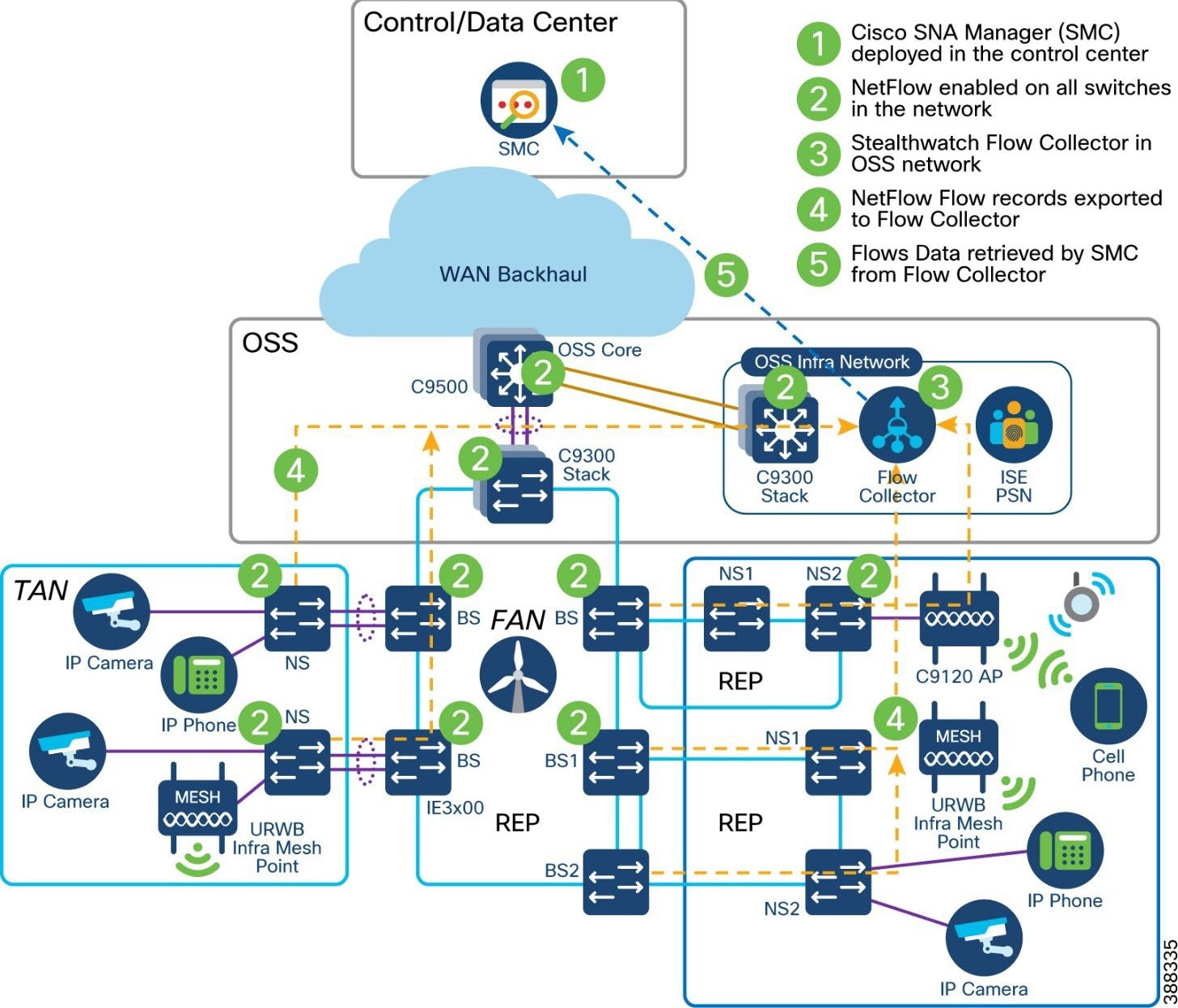

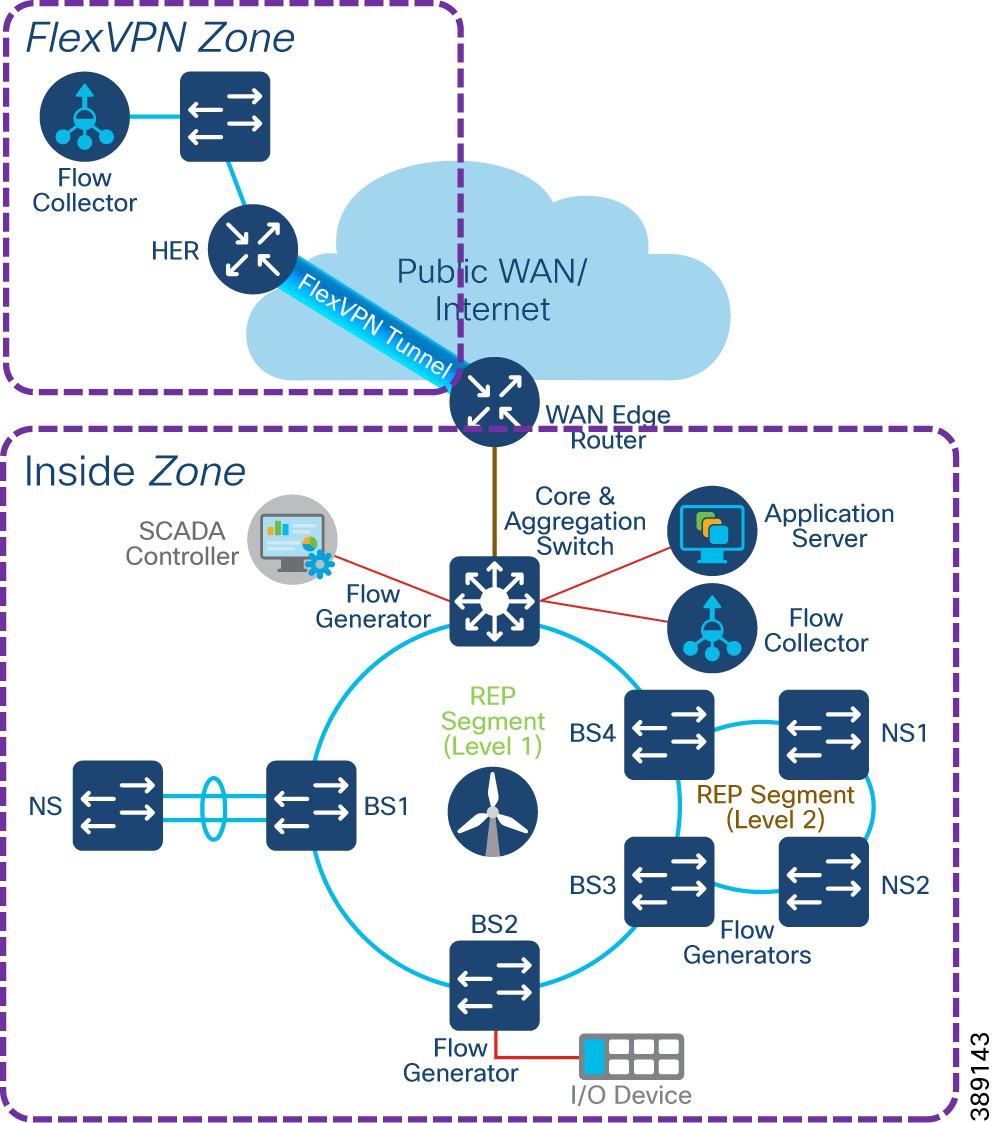

Cisco Secure Network Analytics (Stealthwatch)

Flexible NetFlow Data Collection

Cisco Secure Network Analytics for Windfarm Network Security

Cisco Secure Network Analytics for Abnormal Traffic Detection

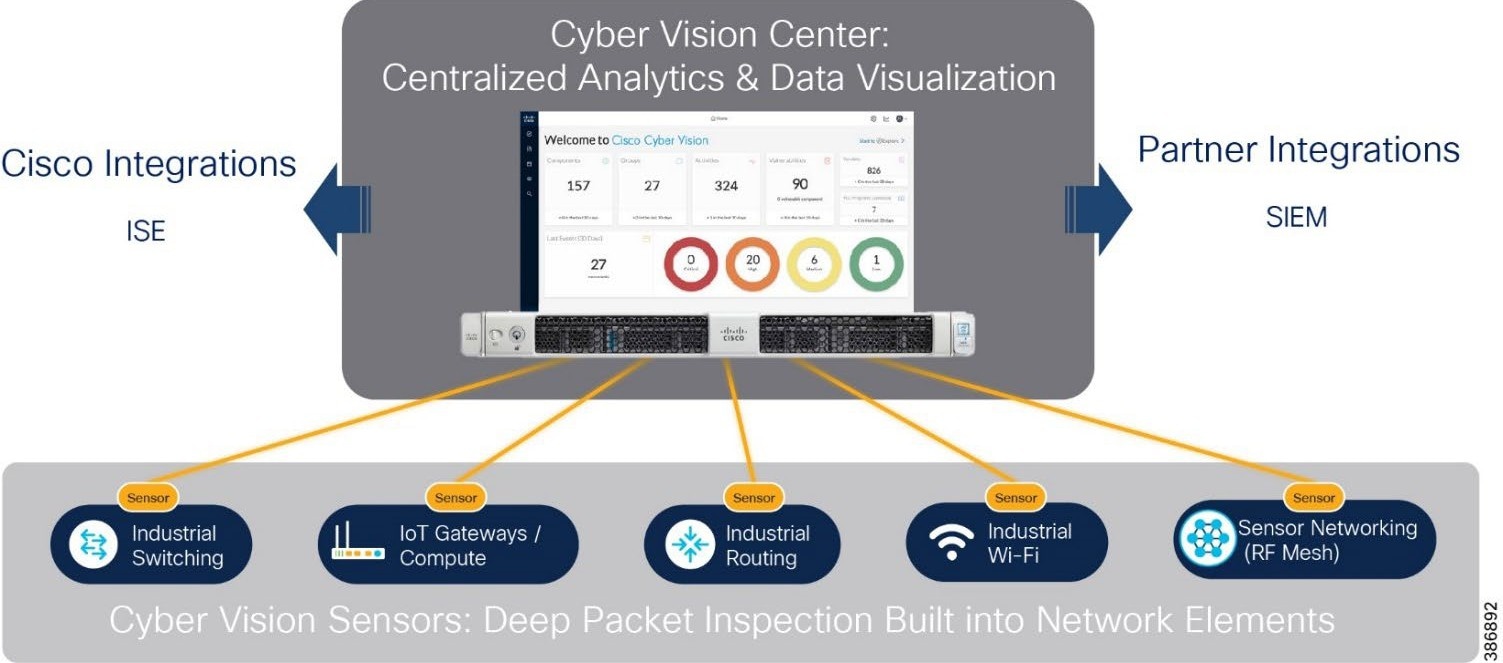

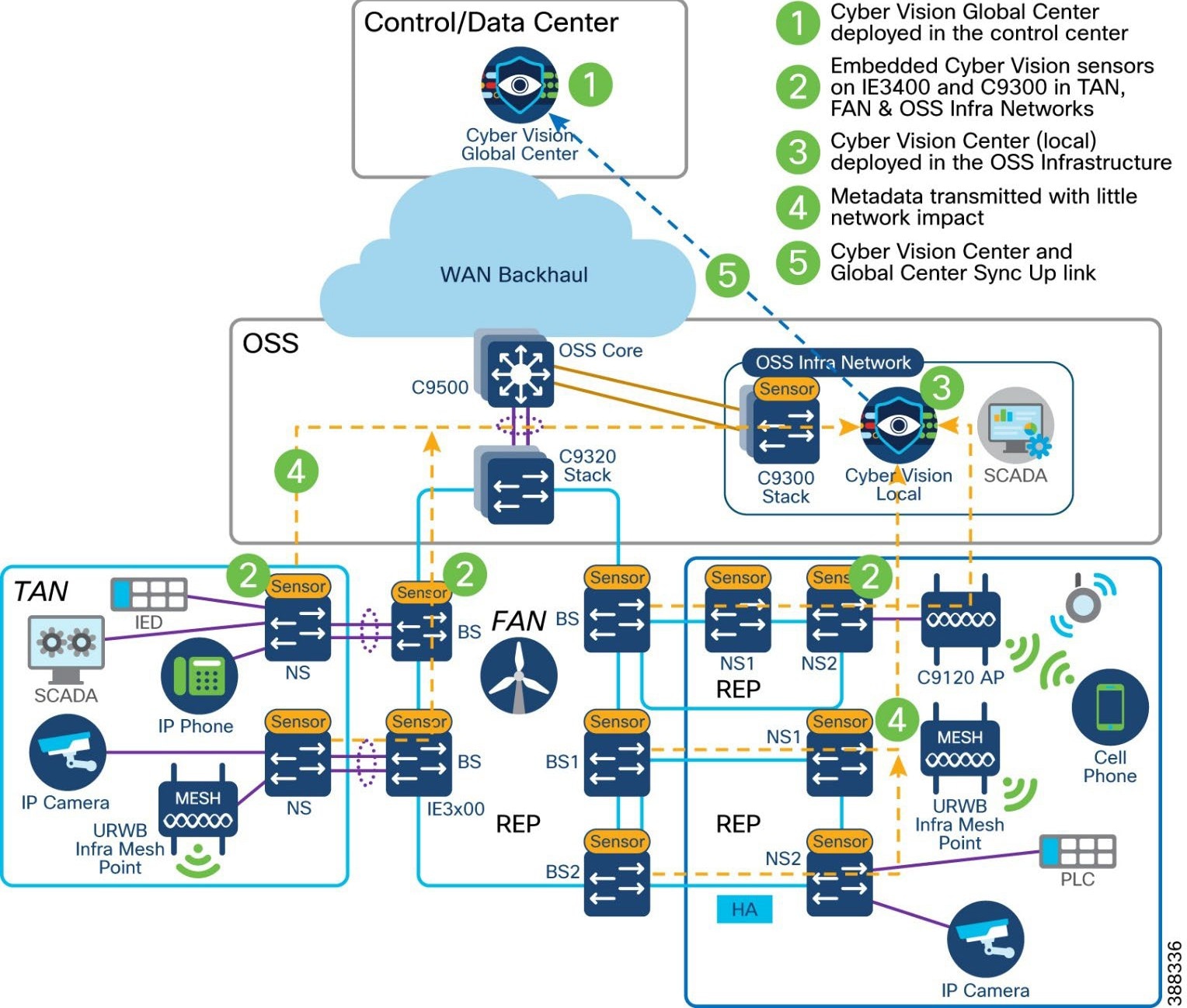

Operational Technology Flow and Device Visibility using Cisco Cyber Vision

Cyber Vision Design Considerations

Wind Farm Cyber Vision Network Sensors

Cisco Cyber Vision’s SEA Architecture and Core Components

Cisco Cyber Vision’s SEA in Wind Farm Network Design

Design Requirements & Considerations

Turbine Operator Network Security Design

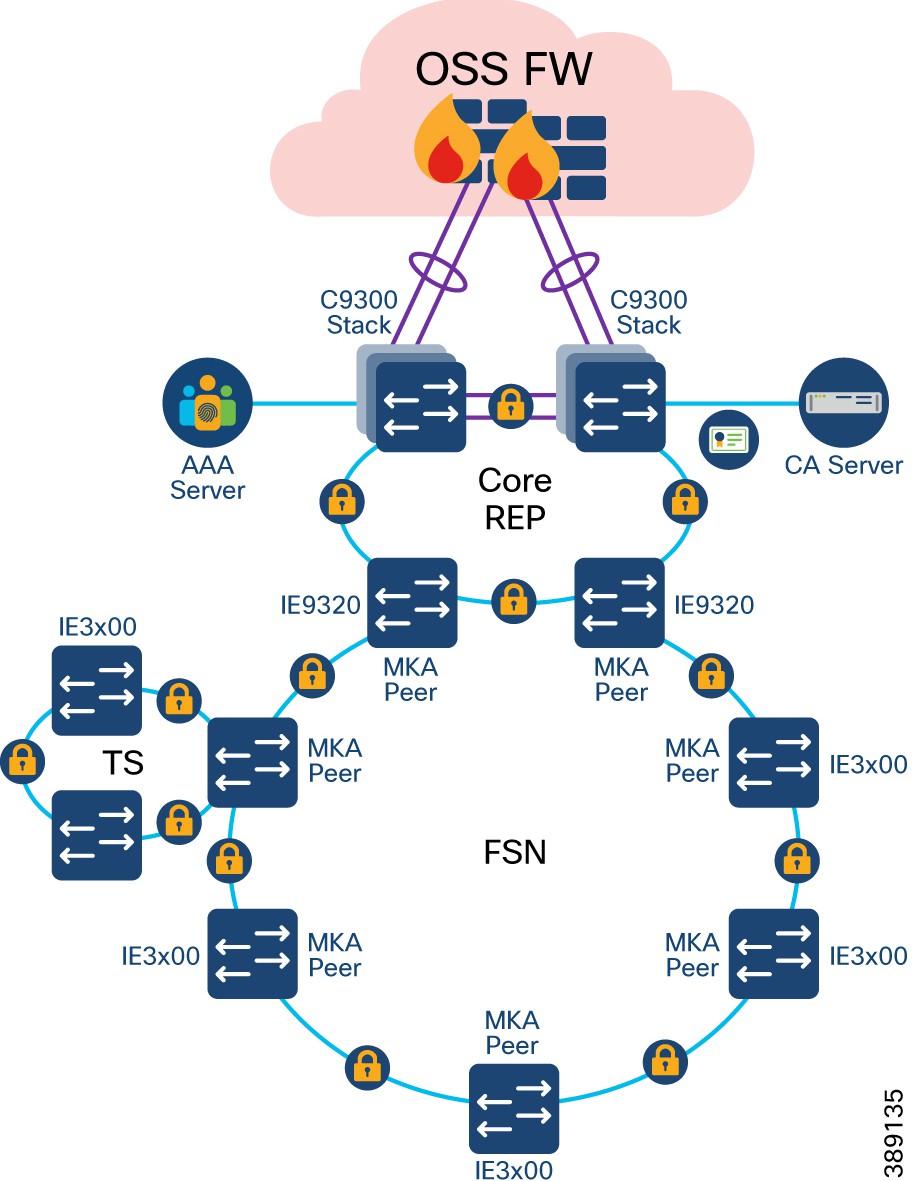

MACsec Encryption in Turbine Operator Network

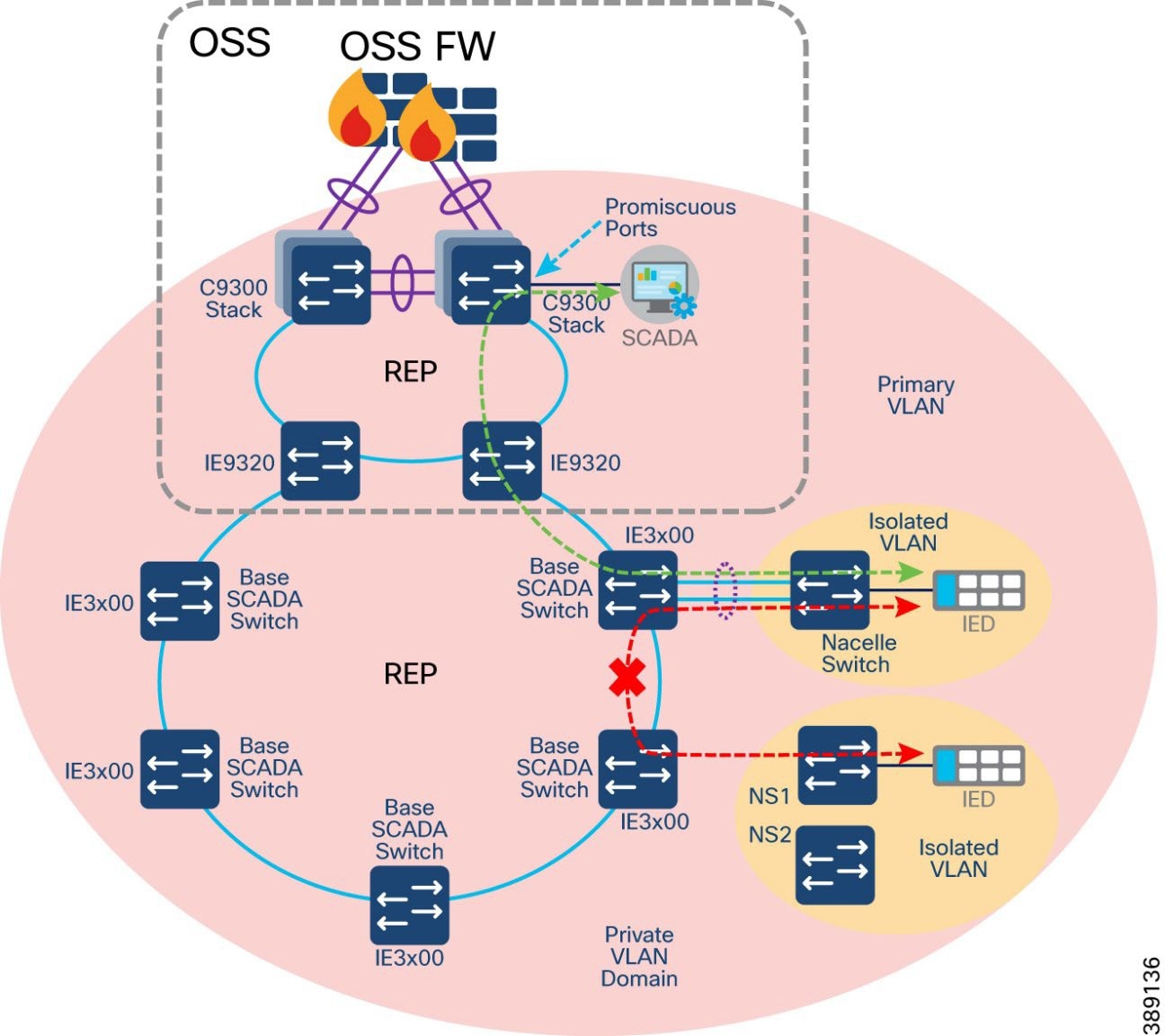

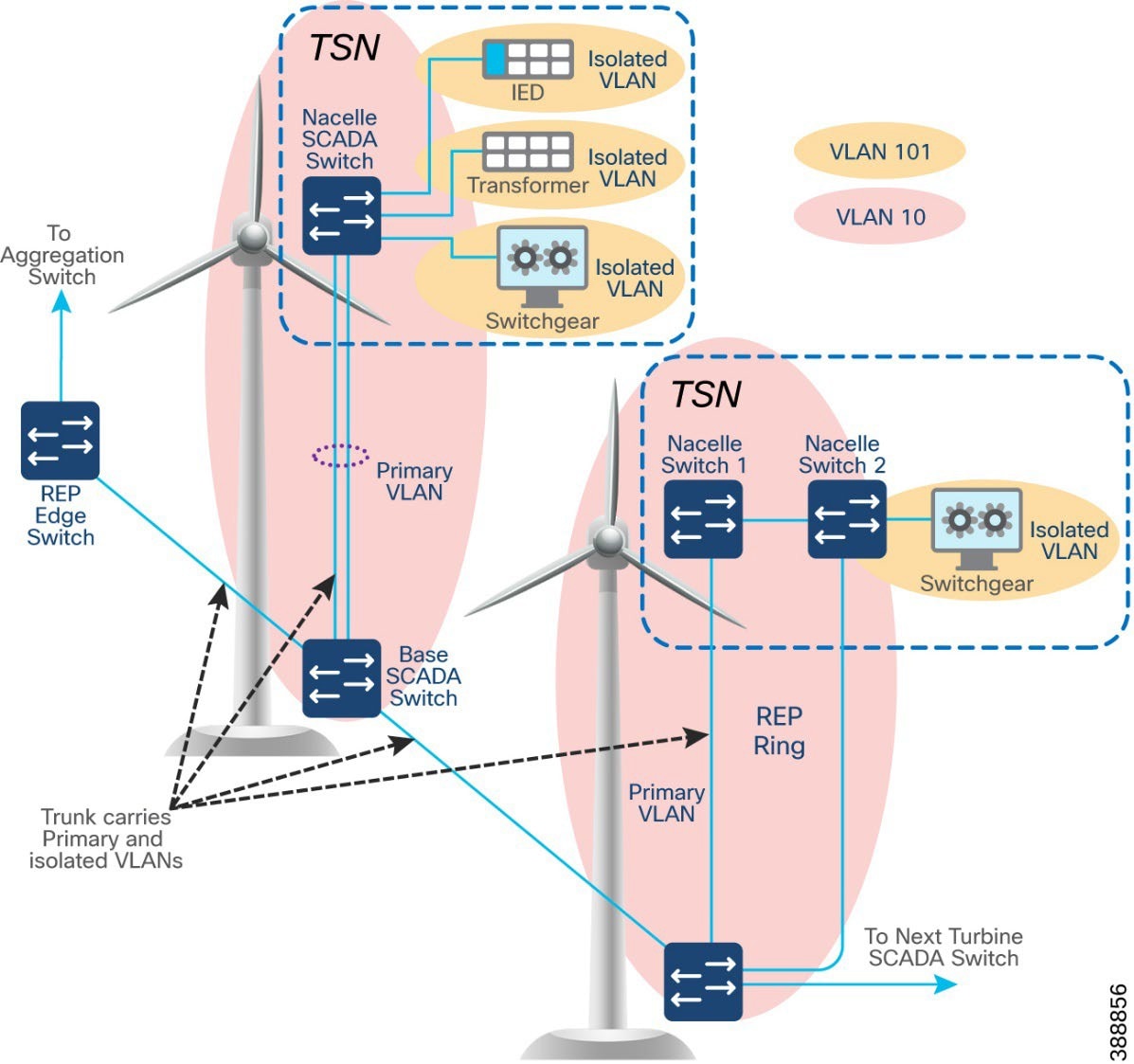

Network micro-segmentation using Private VLAN

NERC CIP Compliance Features and Guidance

CIP-005-7 - Electronic Security Perimeter(s)

CIP-007-6 – System Security Management

CIP-008-6 – Incident Reporting and Response Planning

CIP-010-4 – Configuration Change Management and Vulnerability Assessments

CIP-011-3 – Information Protection

CIP-013-2 – Supply Chain Risk Management

Network Scale and High Availability Summary

Wind Farm Operator Network High Availability Summary

FAN Aggregation High Availability

OSS and ONSS Core High Availability

Cisco Catalyst Center Redundancy

Cisco SD-WAN Validator SD-WAN Validator Orchestrator

Cisco SD-WAN SD-WAN Controller

Cisco SD-WAN Manager SD-WAN Manager Clustering

Turbine operator Network High Availability Summary

SCADA Core Network High Availability

Turbine Operator Compact Onshore Substation

Compact Onshore Substation Use Cases

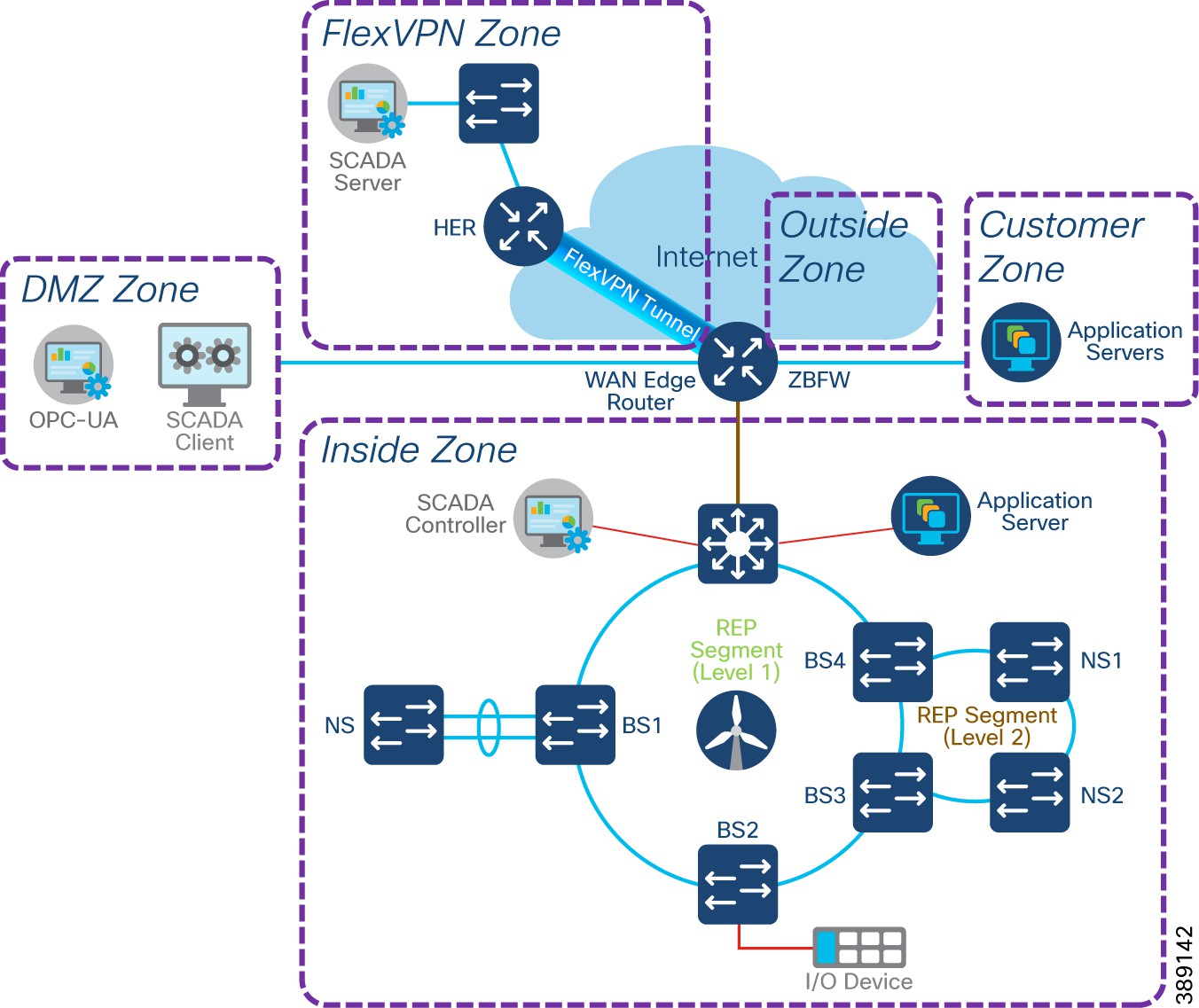

Compact Onshore Substation Network Architecture

Core Network and Routing design considerations

WAN Edge with FlexVPN network design

Cisco IR1101 as WAN Edge Router

Cisco Catalyst 8300 Series Edge Platform as HER

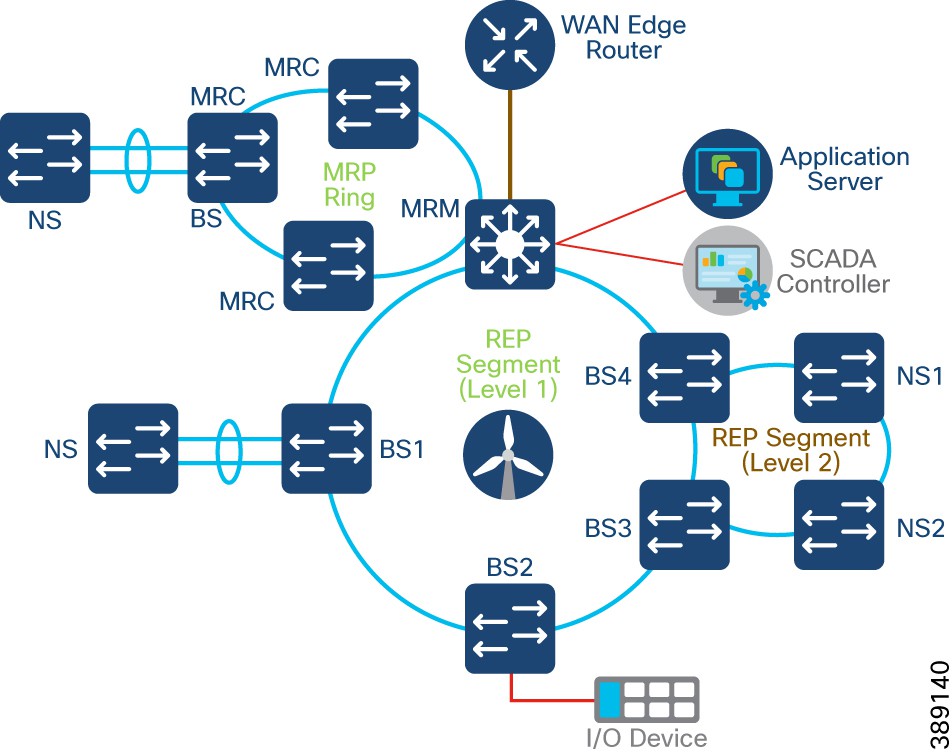

Farm Area SCADA Network (FSN) REP Ring Design

FSN with MRP and REP Rings Design

FSN with MRP and REP Rings Design

Turbine SCADA Network (TSN) Design with REP

Compact Substation Network Security

Compact Substation Network Segmentation using Private VLAN

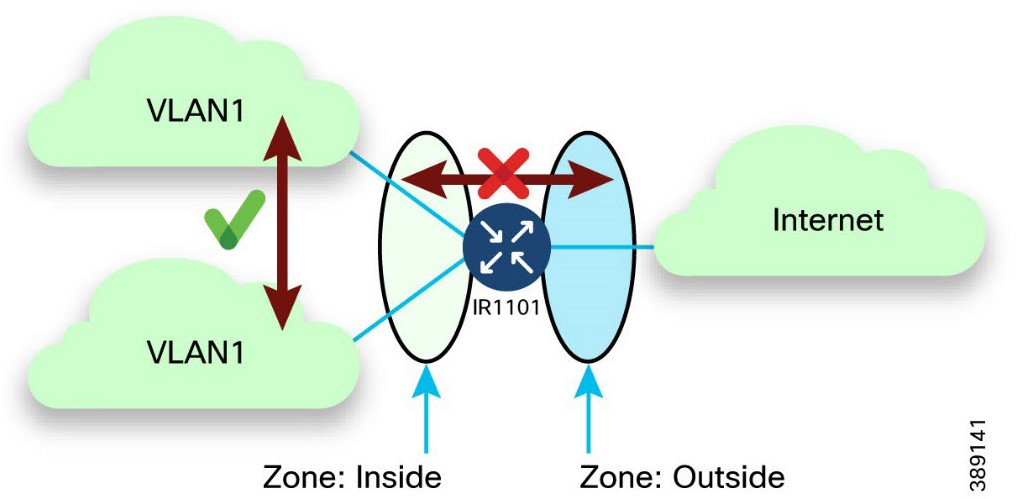

Zone Based Firewall (ZBFW) Design

Network Visibility using NetFlow

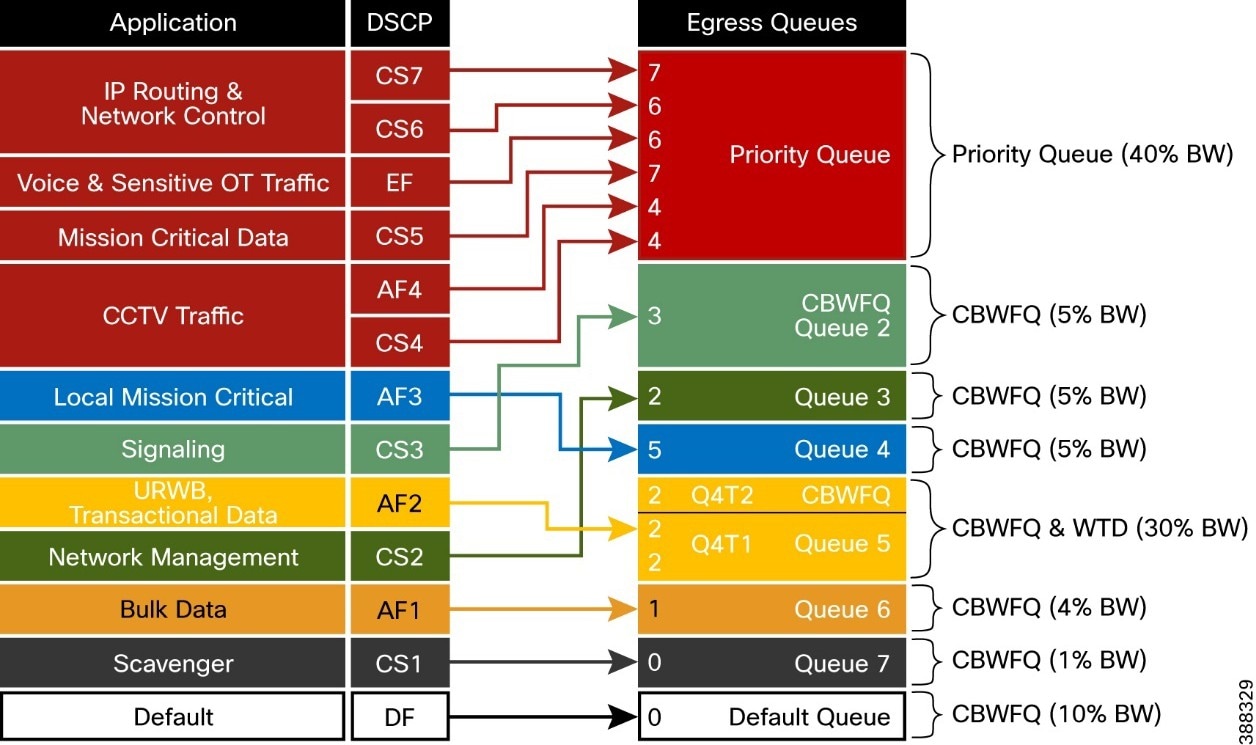

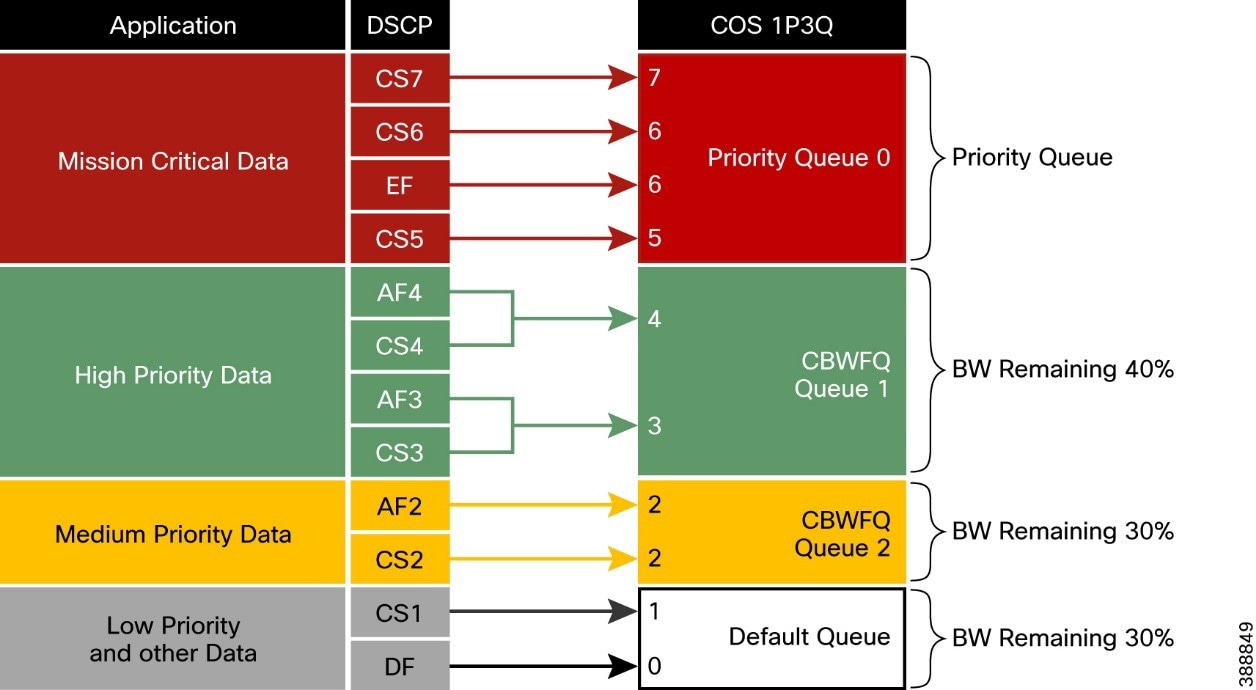

Compact Substation Quality of Service

Design Considerations on Core Switch

Introduction to Offshore Wind Farm

Most countries are investing in renewable energy generation to accelerate the move toward carbon neutrality. The following technologies are growing steadily and being deployed at scale:

● Onshore and offshore wind

● Onshore solar farms

● Onshore battery storage

Other renewable technologies, such as wave, tidal, and energy storage technologies, are also being researched and developed, so further innovative renewable energy deployments can be expected in the future.

Some countries are leading the push to integrate renewable energy into the grid. China and the UK are examples of countries that are leading the way with large deployments of wind farms, both onshore and offshore. European countries in general are setting big targets for offshore wind farms. The United States is also predicted to become a major offshore wind energy producer in the coming decade. Cisco can help with renewable energy technologies in onshore and offshore wind farms, onshore solar farms, and onshore battery storage facilities. This document focuses on the complexities that offshore wind farms face and the solutions that Cisco offers.

Deploying and operating renewable energy technologies can be challenging: they need to operate in harsh and remote locations, a secure and reliable network is required, and that network needs to work flawlessly with the various OT and IT technologies that form the solution.

As digital technology is increasingly required to operate remote distributed energy resource locations, networking and communication equipment must be installed with close attention paid to ease of operations, management, and security. Cisco Validated Designs are simple, scalable, and flexible. They focus on operational processes that are field-friendly and do not require deep technical expertise. Our centralized network device management (Cisco Catalyst Center) and strong networking asset operation capabilities eliminate the need for manual asset tracking and the inconsistencies in field deployments from one site to another. Integration with operations ensures that field technicians can easily deploy and manage devices without the need for IT support, while IT and OT teams have full visibility and control of the deployed equipment.

Additionally, Cisco provides a wide range of connectivity options, ranging from fiber to cellular or high-speed wireless where hardwired connections are not available.

Cisco has launched a complete validated design for offshore wind farms. This design focuses on an end-to-end architecture for the asset operator’s network, including both onshore and offshore locations.

This document refers to the following stakeholders:

● Wind farm operator: The asset operator responsible for the daily operations and administration of a wind farm as a power-producing entity. Many operators are also involved in the development, ownership, and construction of the wind farm. Operators sell power that is produced to public utility companies, typically with long-term fixed price contracts in place.

● Wind farm owner (asset owner): Typically, a consortium of parties such as public utilities or oil and gas companies and financing companies. There are also dedicated renewable energy companies and others who invest in this area. Many wind farm owners also are operators. Many such companies are dedicated renewable companies or renewable energy branches of traditional utilities or oil and gas companies.

● Turbine supplier: Wind turbine suppliers design, test, and manufacture wind turbine equipment, including wind turbine generators (WTGs), and ancillary systems such as supervisory control and data acquisition (SCADA) and power automation. These suppliers also provide ongoing support and maintenance services (O&M) for many wind farm operators. It is typical for the monitoring and maintenance of the wind turbine network to be outsourced to these same suppliers.

● Offshore transmission owner (OFTO): Offshore wind farms are connected to the onshore grid by an export cable system. Regulatory requirements in many countries prohibit power generators from owning transmission assets. Therefore, the export cable often is owned and operated by a third party, the OFTO. Developers, who often also are the wind farm owners or operators, divest the export cable system to a third party through a regulated auction. In some regions, divesting the transmission assets (export cable systems) is not required.

● Grid utility: A traditional power grid operator that provides the connection point for exported power from a wind farm. It can be a transmission system operator (TSO), a distribution network operator (DNO), or a distribution system operator (DSO). Public utilities that are also wind farm owners usually separate the grid and renewable businesses because of strict regulatory requirements.

Cisco Solution for Renewable Energy Offshore Wind Farms

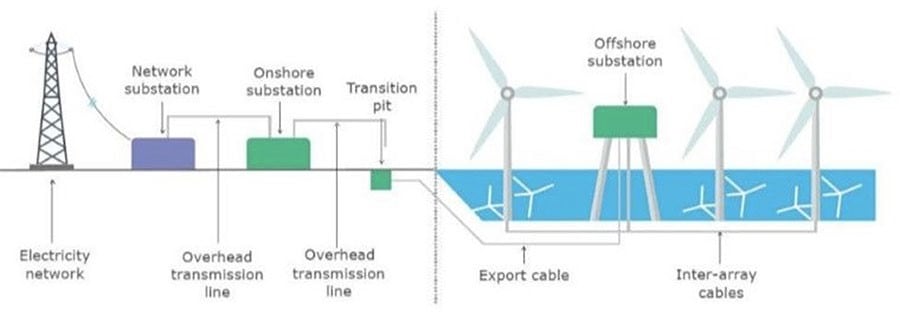

Offshore wind farms are large infrastructure deployments with multiple locations. They include the following:

● Onshore substation

● Offshore substation (platform offshore)

● Offshore wind turbines (ranging from 50 to 300 turbines)

● Onshore operations and maintenance offices

● Offshore service operations vessels (SOV), which provide worker accommodations, offices, and workshops while offshore

Reliable and secure connectivity is key for providing monitoring and control of these offshore and therefore remote assets. Without a reliable and secure communications infrastructure, monitoring and control would be challenging.

From the offshore wind farm operator’s viewpoint, the network needs to be easy to deploy, monitor, upgrade, and troubleshoot.

The network design also needs to be standardized to enable easy specification and procurement at the early stages of a project. Avoiding bespoke work and a different architecture for each project should enable a speedier project delivery phase.

A standardized solution is required that provides the flexibility to meet these needs while facilitating a clear path forward as complexity and scale evolve (for example, larger wind farms, increased number of devices and applications, and increased reliability).

Offshore Wind Farm Cisco Validated Design

Cisco has developed a complete Cisco Validated Design (CVD) for offshore wind farm projects. It provides a blueprint solution for all phases of a project, from specification and procurement to deployment.

The CVD includes networking infrastructure from offshore wind turbine through offshore platforms to the onshore WAN interface point and connectivity to the wind farm operator’s control center.

The CVD is modular to allow for varying wind farm sizes, so it can be adapted for any number of turbines. It provides resilient architectures to allow for fault conditions, and includes cyber security built in from the start.

This CVD offers the following key benefits:

● Flexible deployment options: Support for simple to advanced solutions that cover various deployment options (scalable for small to large wind farms). A modular design that can adjust to the various sizes of wind farms that are deployed. Providing a flexible platform for the deployment of future services and applications.

● Rugged and reliable network equipment: Network equipment designed for harsh offshore environments where required. The ability for network equipment to operate in space-constrained locations and tough environmental conditions.

● Simplified provisioning: Automation and simple onboarding, monitoring, and management of remote networking assets with centralized monitoring and management of multiple wind farm networks.

● Simplified operations: Increased operational visibility, minimized outages, and faster remote issue resolution. Compliance of network device configurations (changes from a known baseline are flagged) and firmware and powerful analytics to provide deep visibility of the network assets.

● Multi-level security: End-to-end robust security capabilities to protect the infrastructure and associated services, monitor traffic flows, and provide control points for interfacing to third-party networks and equipment. Vulnerability information for discovered assets and asset reporting to aid regulatory compliance (for example, NIS 2 and NERC CIP).

The validated design is built on the following functional blocks:

● Wind farm operator data center

● Wind farm wide area network (WAN)

● Onshore DMZ

● Onshore substation

● Offshore DMZ

● Offshore substation

● Turbine Operator network

● Power control and metering (PCM) network

● Turbine plant IT network (for example, enterprise and plant services)

● Service operations vessels (SOVs)

● Operations and maintenance buildings (O&M)

● Turbine Operator Compact Onshore Substation

The validated design allows customers or partners to select the parts that are applicable to a particular project or deployment, or to use the complete end-to-end architecture.

Scope of Wind Farm Release 1.3

This Design Guide provides network architecture and design guidance for the planning and subsequent implementation of a Cisco Renewable Energy Wind Farm solution. In addition to this Design Guide, the Cisco Wind Farm Solution Implementation Guide provides more specific implementation and configuration guidance with sample configurations.

This Release 1.3 supersedes and replaces the Cisco Offshore Wind Farm Release 1.2 Design Guide.

New Capabilities in Offshore Wind Farm Release 1.3

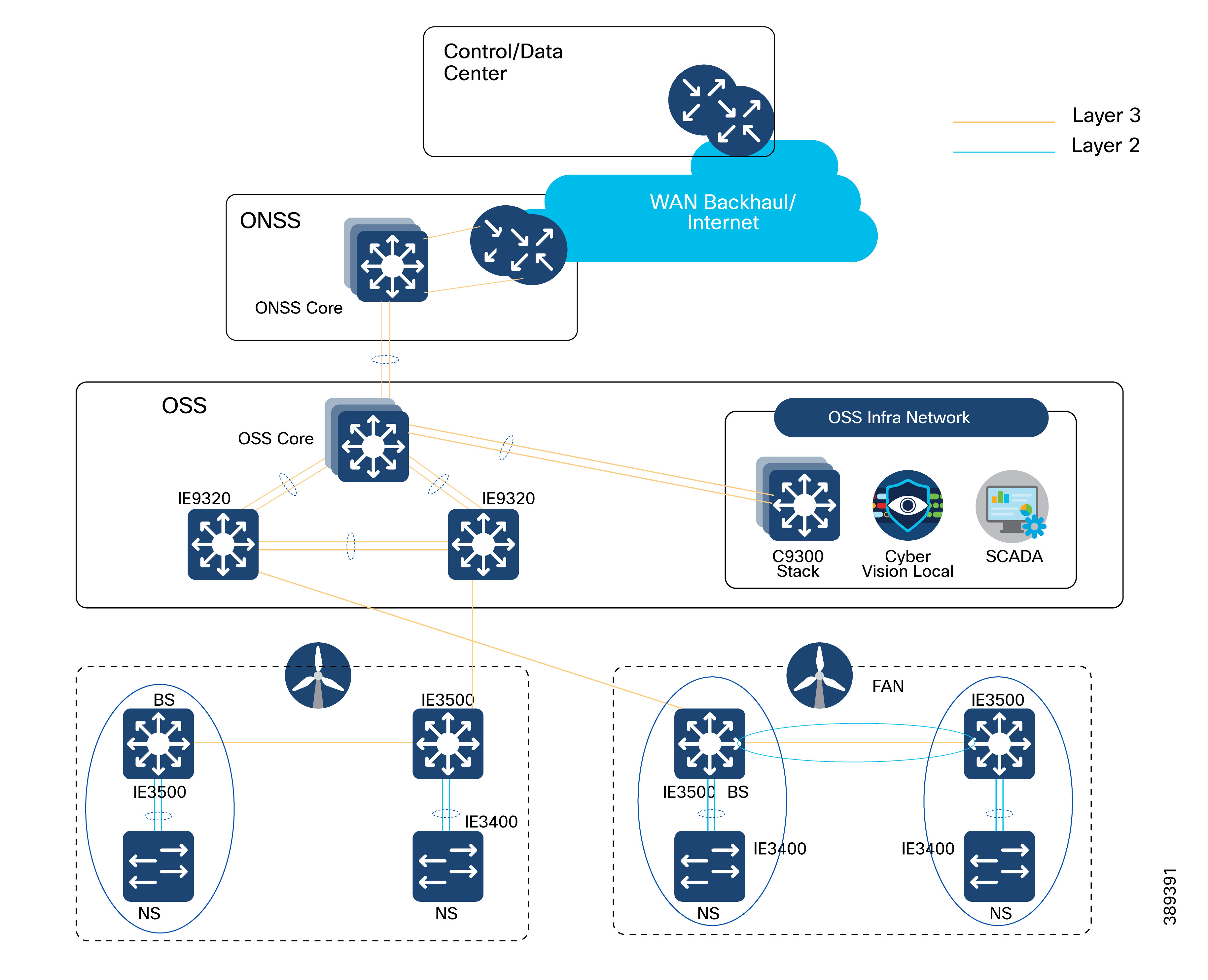

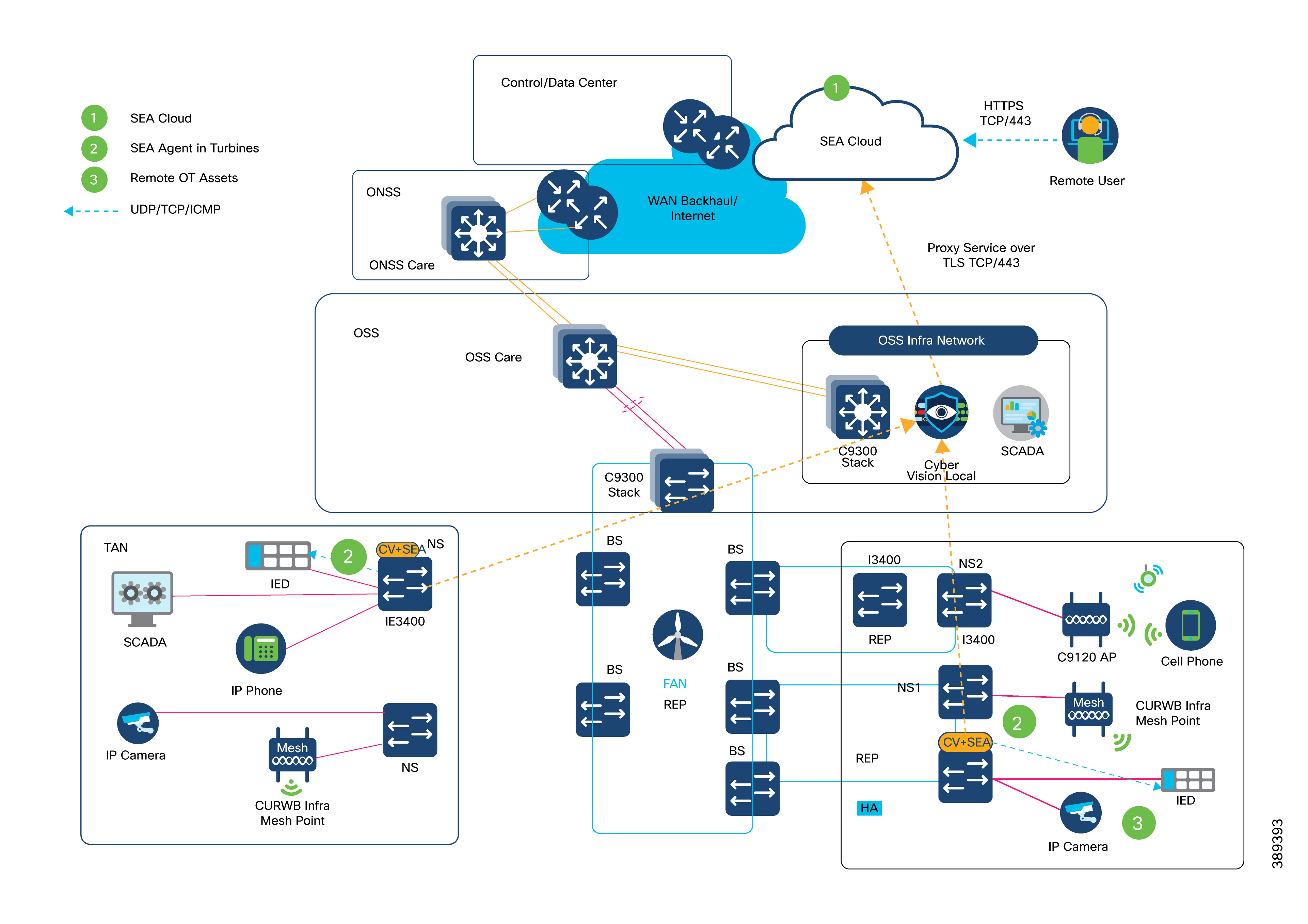

The turbine operator in an offshore wind farm is responsible for the daily operations and administration of turbines in a wind farm as a power-producing entity. Many operators are also involved in the development, ownership, and construction of the wind farm. Operators sell power that is produced to public utility companies, typically with long-term fixed price contracts in place. Turbine operators such as Siemens and Vestas monitor and maintain the wind turbine network with the help of turbine suppliers.

● Turbine Operator Network design in the Offshore Substation (OSS), which includes the Farm Area SCADA Network (FSN) and the Turbine SCADA Network (TSN), with a highly available and resilient network architecture across different OSS geo-locations.

● Turbine Operator network design with:

◦ Media Redundancy Protocol (MRP) ring for the FSN with up to 20 nodes, along with core and FSN REP rings on the IE9320 switch

◦ Timing synchronization in turbine SCADA network using Precision Time Protocol (PTP) over MRP ring

● Wind Farm Asset Operator Network architecture with:

◦ BGP EVPN VXLAN fabric L2 overlay between turbine base and OSS core switches with OSPF based L3 underlay (Optional)

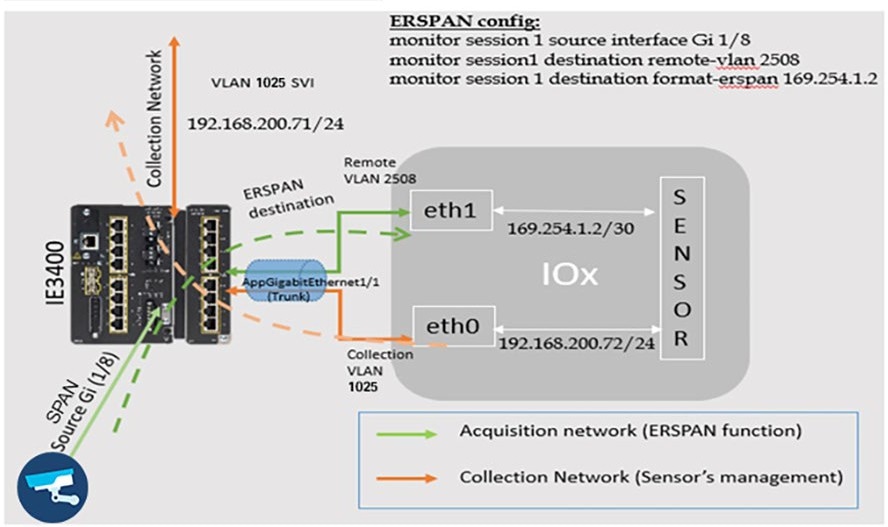

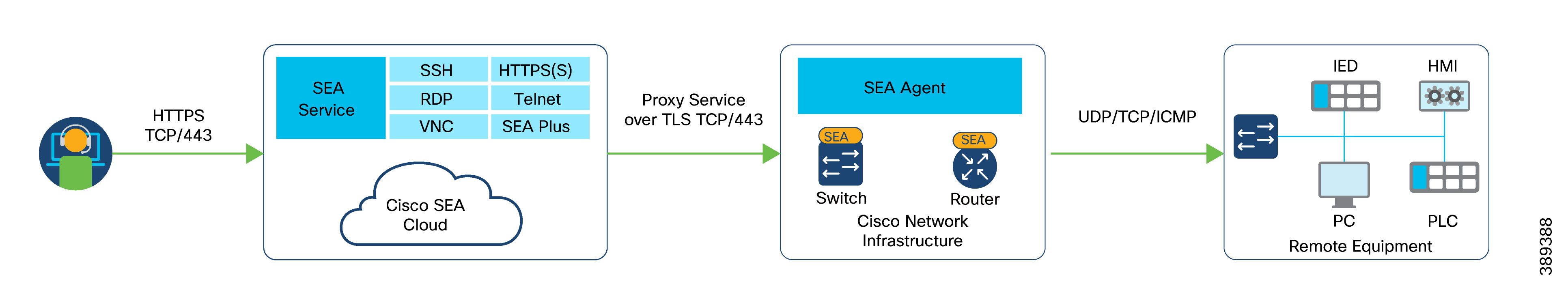

◦ Secure Remote Access of OT assets connected to wind turbines, using combined Cyber Vision (CV) and Secure Equipment Access (SEA) IOx application on turbine switches

The following architectural blocks are out of the scope of this CVD:

● Backhaul design for WAN handoff routers

● Typical service provider or customer MPLS network or other fixed connectivity options (microwave, fiber, and so on).

● Data center network design

Offshore Wind Farm Places in the Network

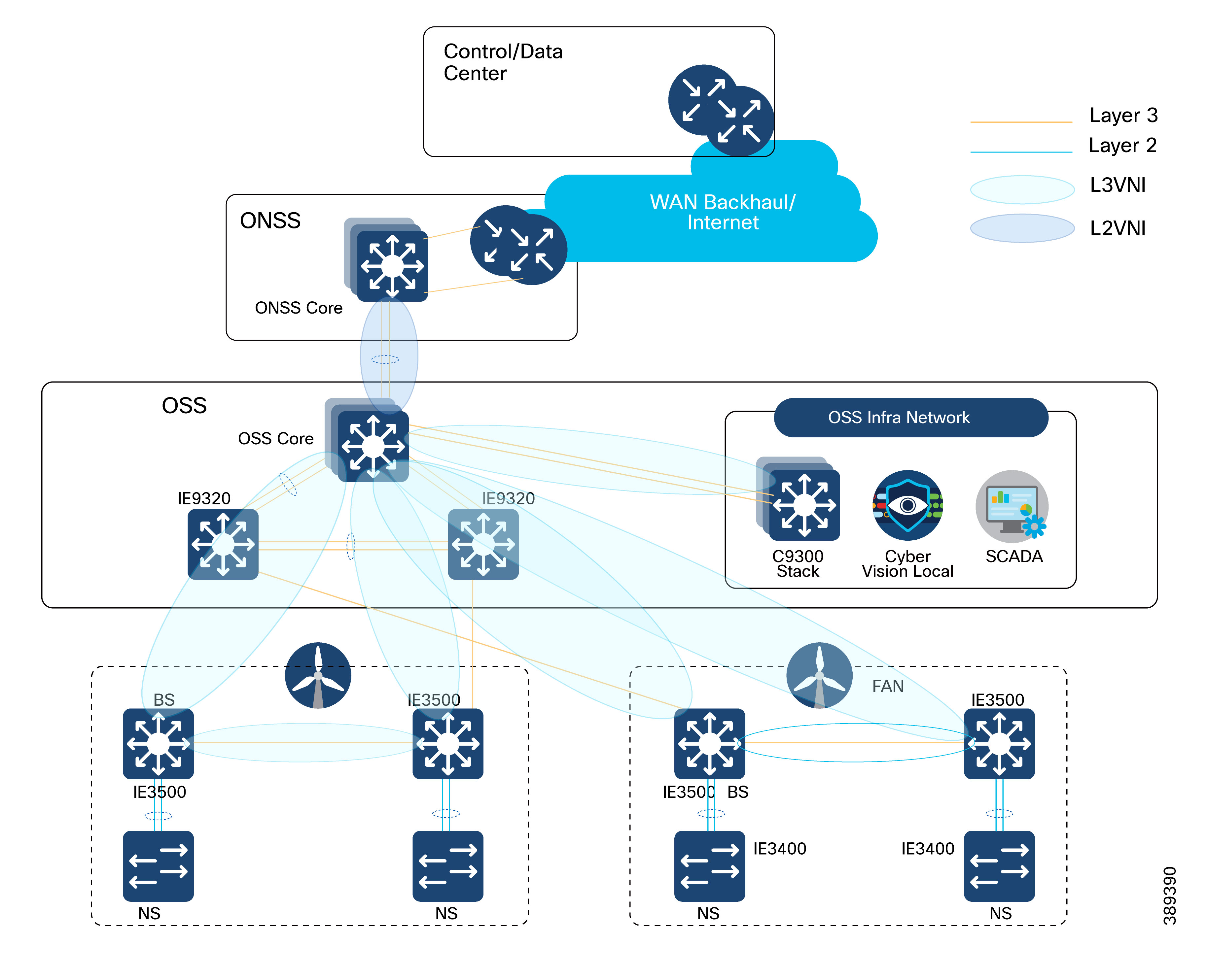

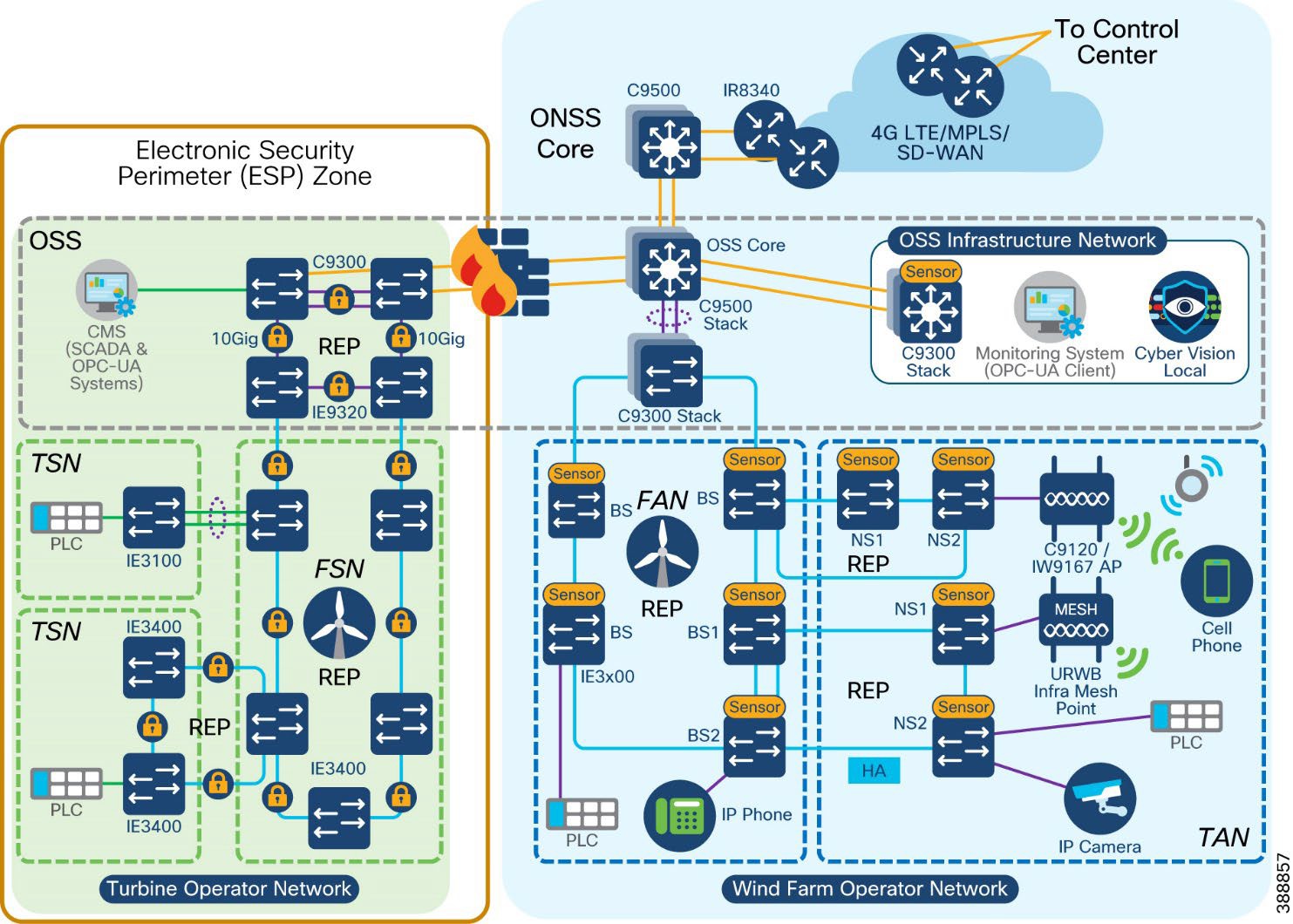

Figure 1 shows the places in the network for an offshore wind farm.


● Wind turbine generator (WTG)

● Offshore substation (OSS)

● Onshore substation (ONSS)

● Service operations vessel (SOV)

● Crew transfer vessel (CTV)

Wind Farm solution architecture network building blocks are defined based on the places in the offshore wind farms.

The communications options that are available at a given site greatly influence the outcomes and capabilities for any use case. The availability of dependable low-latency, high-bandwidth connectivity (such as fiber, LTE/5G cellular, and Wi-Fi) allows for more advanced network and data service options, while sites with bandwidth constraints may be limited to simpler use cases such as remote management and monitoring. Table 1 lists the key use cases in an offshore wind farm.

Table 1. Wind farm use cases

| Use Case |

Type of Services |

Description |

| WTG SCADA |

● Turbine telemetry

● Fire detection

● Turbine ancillary systems

● Weather systems

|

● Telemetry data collection associated with turbine systems and components.

● Detection of smoke and fire within the turbine.

● Telemetry data collection associated with ancillary systems (for example, elevator, navigation lights).

● Data from weather-related systems such as radar for offshore farms, wind speed anemometers.

|

| Process and control systems (ONSS, OSS, other miscellaneous systems) |

● Heating and ventilation systems

● Public announcement and general alarm (PAGA) systems

● Backup generators

● Fire detection systems

|

● Heating, ventilation, and air conditioning (HVAC) systems.

● Audio systems for announcements and alarms.

● Generators for emergency power.

● Fire detection systems.

|

| Marine-related Systems |

● Tetra, VHF, UHF Radio

● Automatic identification system (AIS)

● Radar systems

|

● Ship and worker radio systems.

● Shipping identification system.

● Radar for SOV management.

|

| Enterprise services |

● IP telephony

● Corporate network access

● Guest network access

|

● Enterprise voice communications for workers.

● Fixed and mobile handsets (Wi-Fi).

● General network access for enterprise services such as email, file sharing, video, and web.

● Basic internet access for subcontractors.

|

| Physical security |

● Closed circuit television (CCTV)

● Access control

|

● Physical security monitoring of turbine assets and areas around turbines for safety and security.

● Intrusion detection and entry into areas such as O&M offices and turbine towers.

|

| Miscellaneous systems |

● Bat and bird monitors

● Radar

● Lightning detection systems

● Lidar (turbine monitoring)

|

● Detection of protected wildlife.

● Additional radar equipment as specified by certain bodies (military, Coast Guard, and so on).

● Detection of lightning strikes.

● Monitoring of turbine performance and blade dynamics.

|

| SOV connectivity |

● IP telephony

● Wireless network access (Wi-Fi)

|

● Enterprise. Voice communication for workers.

● Fixed and mobile handsets.

● Wi-Fi access points within SOVs to provide enterprise network access for staff and provide IP telephone coverage.

|

| Environmental sensors |

● Heat and humidity

● Door open and close

● Machine temperature

|

● Turbine nacelle or tower and external measurements.

● Turbine tower, external ancillary cabinets.

● Machine casing or transformer case temperature.

|

| Location-based services |

Personnel location and man down (for lone worker) |

● Wi-Fi access points providing Bluetooth capability for short-range personnel devices for location and man down worker safety.

|

| Tubine Operator Network Services |

Turbine operational telemetry data collection |

● Turbine monitor and operational data collection using SCADA systems.

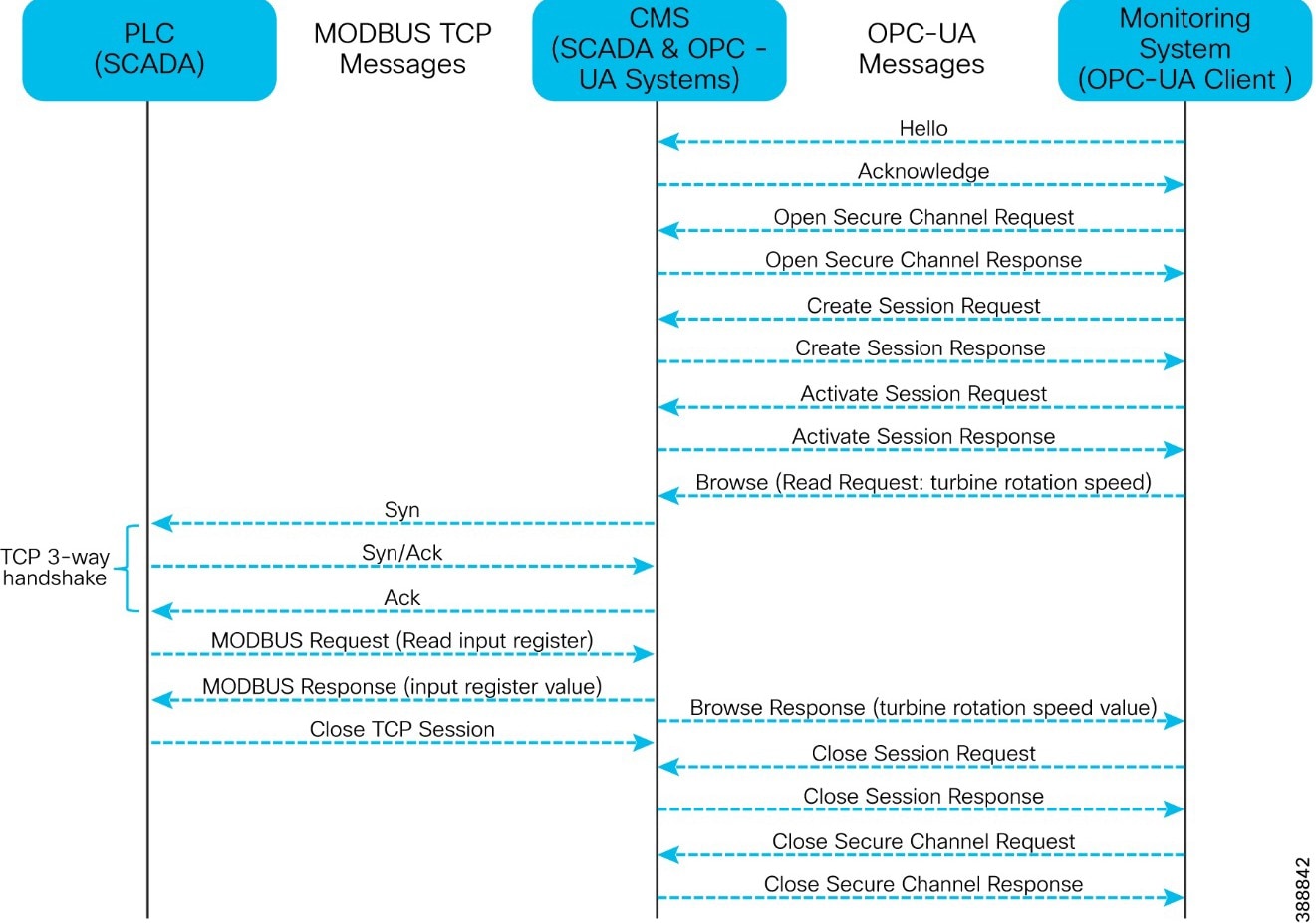

● OPC-UA gateway/server which translates turbine telemetry data (For example, SCADA MODBUS) into OPC-UA protocol messages.

Provide turbine telemetry data as OPC-UA protocol messages to asset operator on demand. |
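The last row of Table 1 mentions an OPC-UA gateway that translates SCADA MODBUS telemetry into OPC-UA messages. The following is a minimal sketch of that translation idea only, not the gateway's actual implementation. The register addresses, scaling divisors, and node names (`WTG01.WindSpeed` and so on) are hypothetical, and no MODBUS or OPC-UA library is used: raw 16-bit register values are simply mapped to values keyed by NodeId strings in the standard `ns=<index>;s=<name>` notation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegisterMapping:
    """Maps one 16-bit Modbus holding register to one OPC UA node (all values hypothetical)."""
    address: int          # Modbus register address (assumption)
    node_id: str          # OPC UA NodeId in string notation
    divisor: int          # raw counts per engineering unit (assumption)
    signed: bool = False  # interpret the register as two's complement


# Hypothetical register map for a single turbine.
REGISTER_MAP = [
    RegisterMapping(0, "ns=2;s=WTG01.WindSpeed", 10),
    RegisterMapping(1, "ns=2;s=WTG01.NacelleTemperature", 10, signed=True),
]


def to_signed16(raw: int) -> int:
    """Convert an unsigned 16-bit register value to a signed integer."""
    return raw - 0x10000 if raw & 0x8000 else raw


def translate(registers: dict[int, int]) -> dict[str, float]:
    """Turn raw register values into engineering values keyed by NodeId."""
    values = {}
    for mapping in REGISTER_MAP:
        raw = registers[mapping.address]
        if not 0 <= raw <= 0xFFFF:
            raise ValueError(f"register {mapping.address} is not a 16-bit value")
        if mapping.signed:
            raw = to_signed16(raw)
        values[mapping.node_id] = raw / mapping.divisor
    return values


if __name__ == "__main__":
    # Raw values as they might be read from a turbine controller (made up).
    print(translate({0: 125, 1: 0xFFCE}))
```

A real gateway would read the registers over the network and publish the values through an OPC-UA server; the sketch only shows the mapping step between the two data models.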

Wind Farm Actors in the Network

Various wind farm use cases and places need different endpoints or actors in the network. The following actors are needed in a wind farm network to deliver the features for the use cases that the previous section describes. These actors usually are key to operating a renewable energy site, providing both monitoring and control capabilities.

● CCTV cameras: Physical safety and security IP cameras

● IP phones: Voice over IP (VoIP) telephony devices

● Programmable logic controller (PLC) devices and input and output (I/O) controllers: Power systems protection and control

● Intelligent electronic devices (IED): Power systems protection and control

● Wi-Fi access points: Provide corporate IT Wi-Fi access

● Cisco Ultra Reliable Wireless Backhaul (URWB) access points: Provide wireless backhaul for SOV connectivity

● Wind turbine monitoring and control: SCADA and monitoring systems

● Fire detection and alarming devices or sensors

● HVAC systems

● Environmental and weather systems: Sensors that are associated with monitoring weather and environmental conditions

● Lightning detection: Sensors that are associated with lightning detection

● Marine systems (radar, radio)

Each actor in an offshore wind farm requires network communication with other actors or application servers in the offshore substation (OSS) and control or operations center based on use case requirements. Table 2 lists the traffic types and flows across the offshore wind farm places in the network.

Table 2. Network Segments and Design Use Cases

| Traffic Type | Traffic Flows in the Network |
| --- | --- |
| Video traffic (CCTV cameras) | ● Turbine nacelle to control center or OSS: CCTV camera in turbine nacelle switch streaming live video to video server in the control center or OSS infrastructure<br>● Turbine base switch to control center: CCTV camera in base switch streaming live video to a video server in the control center or OSS infrastructure |
| SCADA data for monitoring and control (PLCs and I/O devices that are external to the turbine supplier’s dedicated SCADA network; that is, wind farm operator SCADA) | ● Traffic within turbine nacelle: PLC and I/O devices communication using SCADA protocols (for example, DNP3, MODBUS, or T104)<br>● Turbine base switch to nacelle: PLC in turbine base switch to an I/O device in nacelle using SCADA protocols (for example, DNP3, MODBUS, or T104)<br>● Traffic within turbine base switch: Communication between PLC and I/O devices in a turbine base switch<br>● Turbine base switch to base: Communication between PLC in a turbine base switch and I/O device in another turbine base switch |
| IP telephony voice traffic (IP phones) | ● Maintenance and operations personnel voice communication between turbine base switch or nacelle and OSS<br>● Maintenance and operations personnel voice communication between turbine base switch networks<br>● Maintenance and operations personnel voice communication between an SOV and turbine base switch or OSS networks |
| Wi-Fi traffic (Wi-Fi APs) | ● Turbine nacelle to OSS infrastructure or control center: Workforce AP in nacelle to WLC in the OSS network<br>● Turbine base switch to OSS infrastructure or control center: Workforce AP in a turbine base switch to WLC in the OSS network<br>● Workforce corporate network access from offshore wind farm<br>● Guest internet wireless access from offshore and onshore substation |
| Offshore and onshore power automation and control SCADA traffic (IEDs, switchgear, other substation OT devices if any) | ● Turbine nacelle switch to OSS infrastructure<br>● Turbine base switch to OSS infrastructure<br>● Management traffic between IEDs and SCADA systems in the OSS and IEDs and SCADA devices connected to turbine nacelle or turbine switches |
| Cisco Ultra Reliable Wireless Backhaul (URWB) traffic | ● SOV wireless connectivity using a URWB network from turbine nacelle or turbine switch or OSS network |
| Turbine Operator network traffic (dedicated turbine operator SCADA network provided by a turbine manufacturer) | ● SCADA OPC-UA protocol traffic between OSS DMZ and OSS infrastructure network<br>● SCADA OT protocols (DNP3/MODBUS IP) to OPC-UA protocol message translation (OPC-UA gateway) between IED, switchgear, and OPC-UA server in OSS |
| SD-WAN and network management traffic (OMP, SSH, SNMP and network control protocols, and so on) | ● Management traffic from ONSS and OSS via the WAN to the control center<br>● Management traffic related to Cisco SD-WAN and Catalyst Center network management platform |
| Auxiliary systems traffic (HVAC, fire, lightning detection, environmental sensors) | Turbine nacelle and switch to OSS infrastructure or control center |

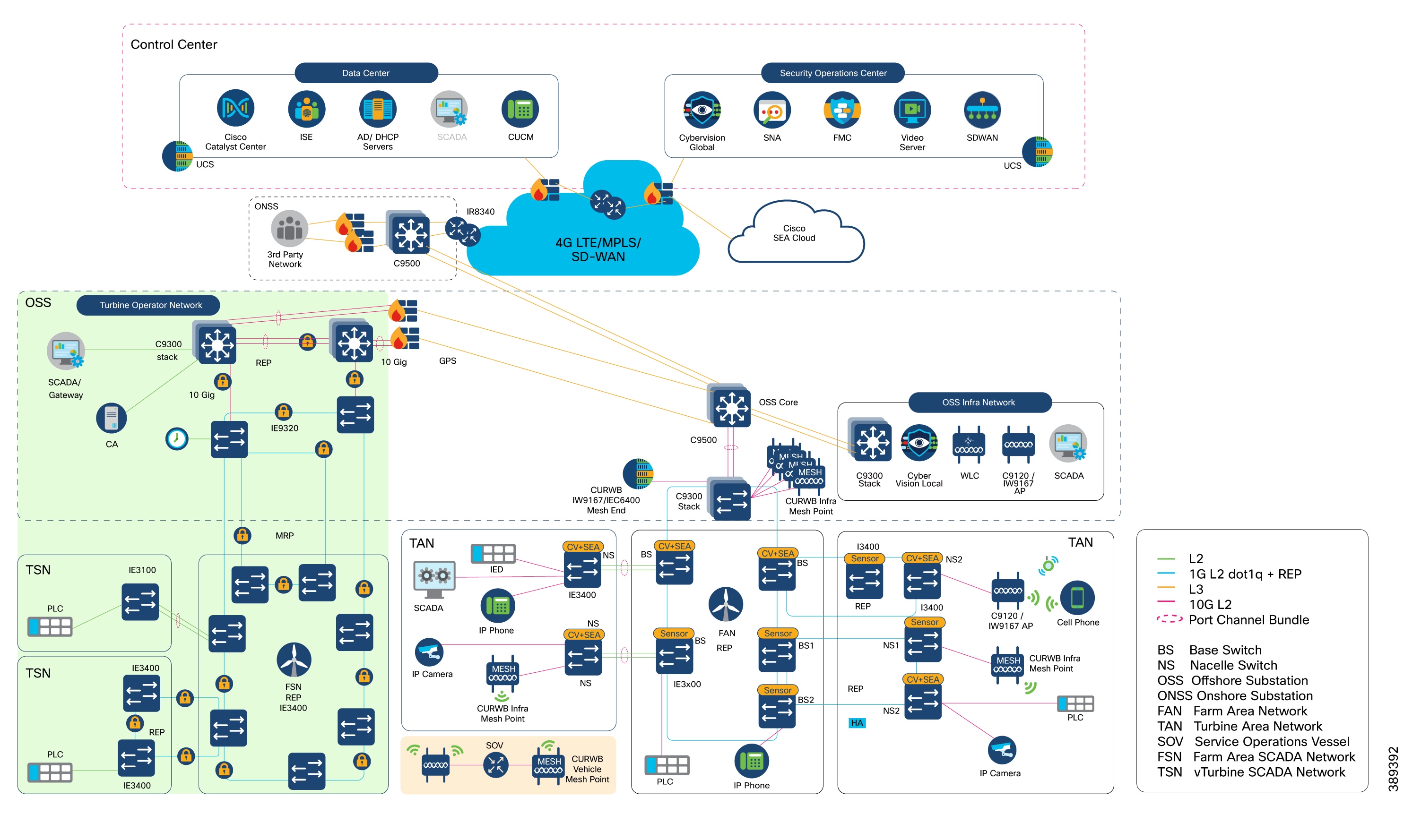

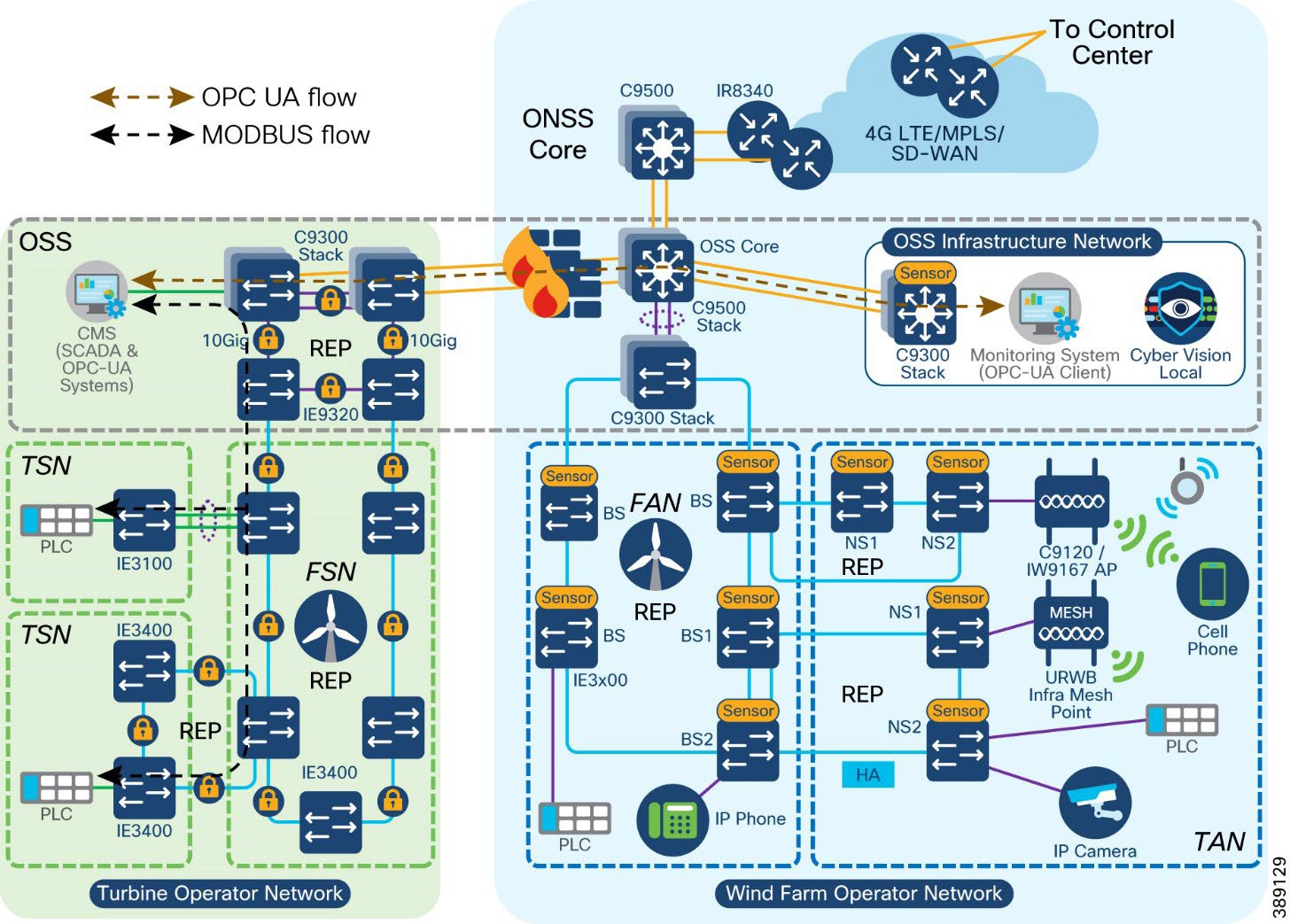

Offshore wind farm solution architecture is built on the following functional blocks:

● Wind farm operator control center (includes data center and security operations center): Hosts wind farm IT and OT data center applications and servers such as Cisco Catalyst Center, Cisco ISE, AD server, DHCP server, Cisco Cyber Vision Center (CVC), and more.

● Wind farm wide area network (WAN): A backhaul network for interconnecting a wind farm onshore substation with a control center. It can be a privately owned MPLS network, a service provider LTE network, or a Cisco SD-WAN managed network.

● Onshore substation (ONSS): A remote site in a wind farm that interconnects an offshore substation with a control center via a WAN.

● Offshore Substation (OSS): Consists of offshore core network and infrastructure applications to provide network connectivity and application access to wind turbine bases and nacelle switches and their IT and OT endpoints.

● Farm area network (FAN): An aggregation network that connects multiple wind turbine base switches to their aggregation switches.

● Turbine area network (TAN): A switched layer 2 network typically formed by one or more nacelle switches in a wind turbine.

● SOV: Wind farm operation and maintenance vessel that moves around offshore wind farms and connects to TAN and OSS or ONSS networks for service operation personnel network communication.

● Third-party networks or turbine operator networks: Turbine vendor’s SCADA control network that runs separately from a wind farm operator’s network. This CVD also covers the third-party turbine operator network architecture which includes:

◦ Farm Area SCADA Network (FSN) - A dedicated network that links multiple turbines to the offshore substation. Commonly a fiber ring that aggregates on the offshore substation.

◦ Turbine SCADA Network (TSN) - A dedicated network within the turbine itself (tower and nacelle), providing connectivity for the turbine SCADA devices.

Each of these building blocks and its design considerations is discussed in detail in Chapter 4: Solution Design Considerations.
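The layering of the building blocks above can be sketched as a simple upstream map, which is handy when reasoning about which blocks a given flow traverses on its way to the control center. This is an illustrative simplification: it assumes a single linear path (TAN to FAN to OSS to ONSS to WAN to control center) and leaves out the SOV and the third-party turbine operator networks. The names `UPSTREAM` and `path_to_control_center` are hypothetical.

```python
# Each building block points to the next block toward the control center.
# Assumption: one linear uplink path; SOV and third-party networks are omitted.
UPSTREAM = {
    "TAN": "FAN",    # nacelle switches uplink to the turbine base switch ring
    "FAN": "OSS",    # base switch rings aggregate at the offshore substation
    "OSS": "ONSS",   # offshore substation connects to the onshore substation
    "ONSS": "WAN",   # onshore substation hands off to the WAN
    "WAN": "Control center",
}


def path_to_control_center(block: str) -> list[str]:
    """Return the ordered list of blocks from `block` up to the control center."""
    if block != "Control center" and block not in UPSTREAM:
        raise ValueError(f"unknown building block: {block}")
    path = [block]
    while path[-1] in UPSTREAM:
        path.append(UPSTREAM[path[-1]])
    return path


if __name__ == "__main__":
    print(" -> ".join(path_to_control_center("TAN")))
```

For example, a CCTV stream from a nacelle camera to a video server in the control center would cross every block in the returned path, while the same stream to a server in the OSS would stop at the third element.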

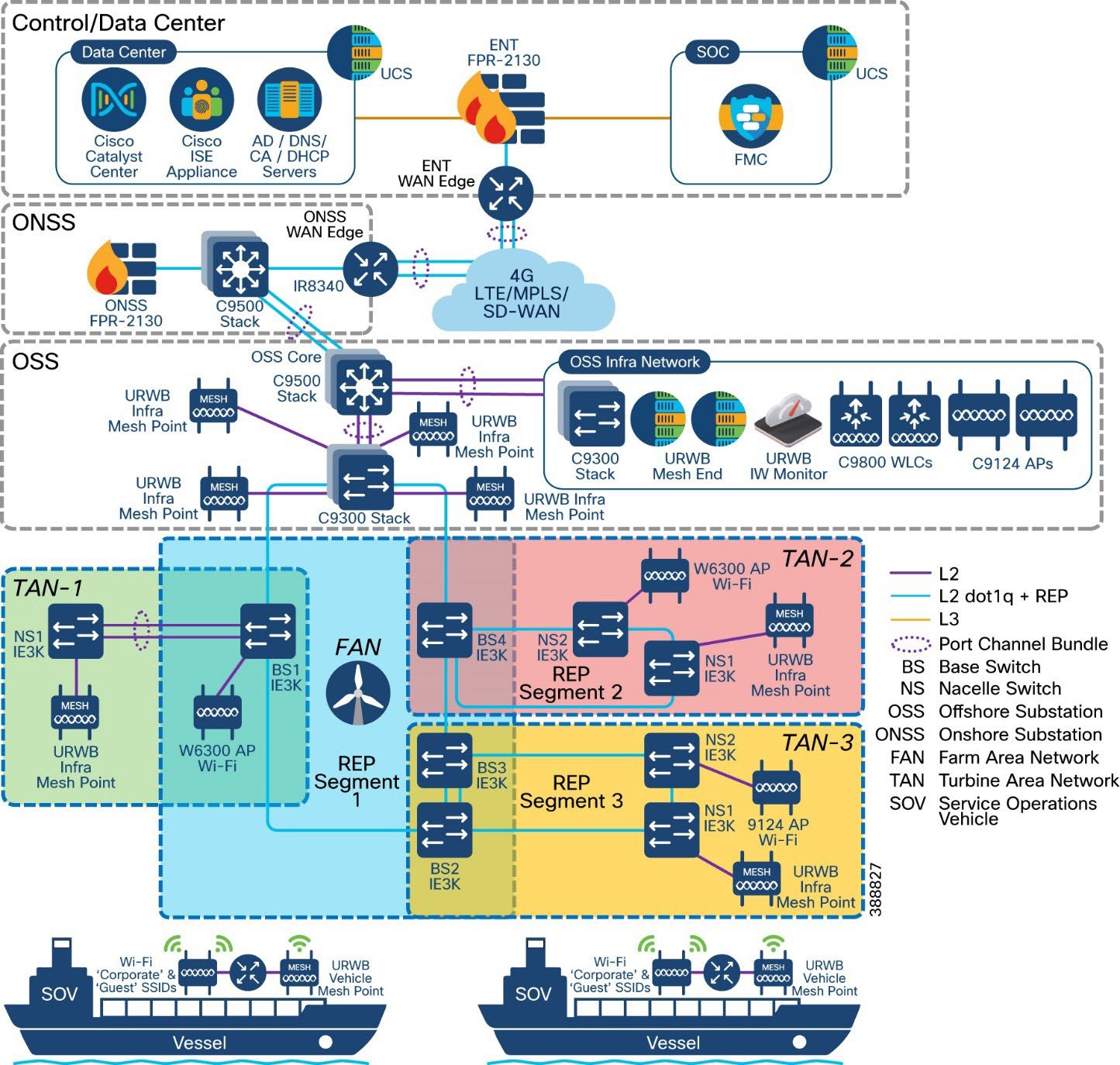

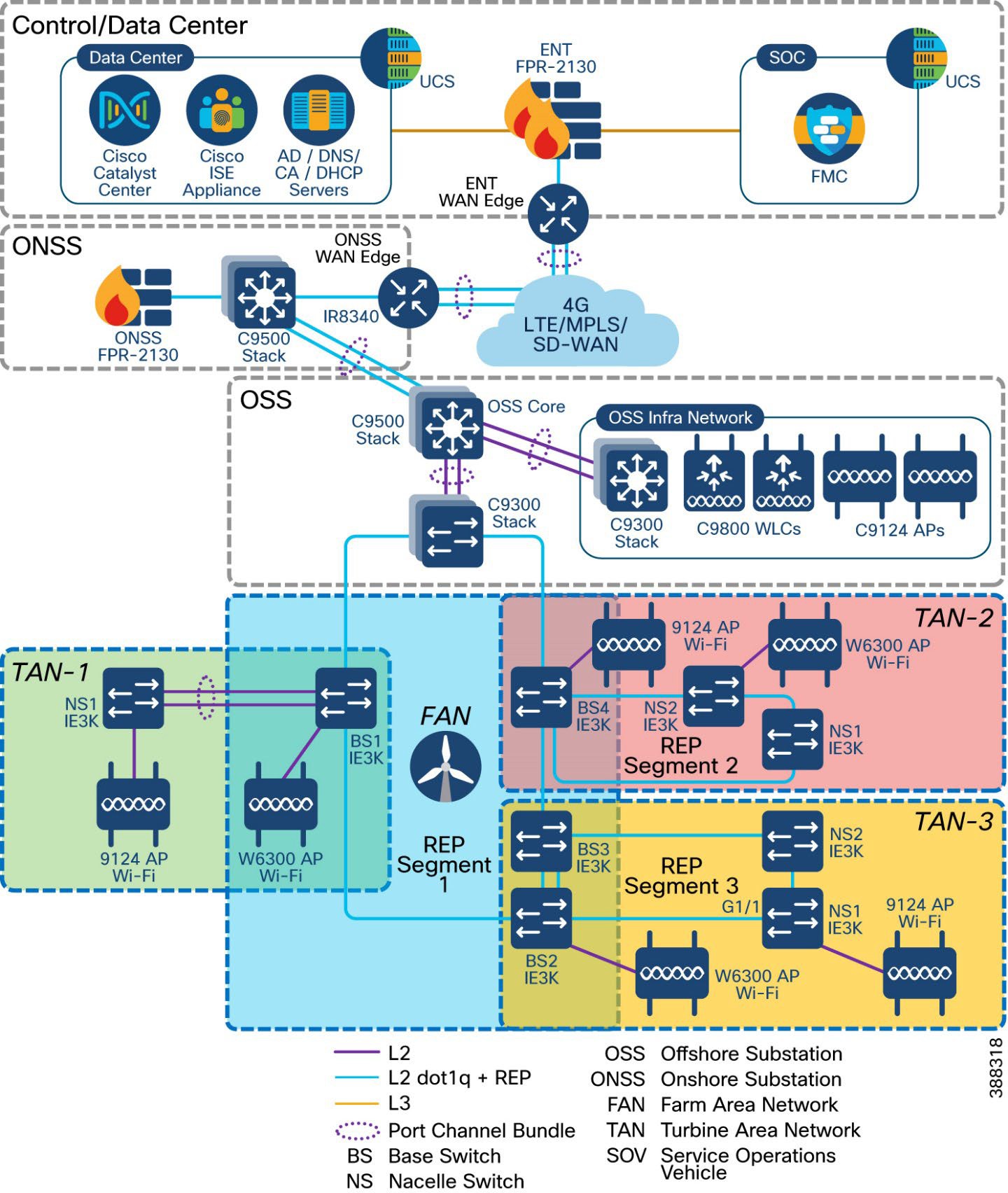

Figure 2 shows the end-to-end solution network architecture of a wind farm.

In Figure 2, the section highlighted in green shows the Turbine Operator Network and the new capabilities in an offshore wind farm, as discussed in the Scope of Wind Farm Release 1.3 section.

This section describes the components of a wind farm network. Several device models can be used at each layer of the network. The device models that are suitable for each role in the network and the corresponding CVD software versions are described in Solution Hardware and Software Compatibility.

You can choose a device model to suit specific deployment requirements such as network size, cabling and power options, and access requirements. Table 3 describes device models that are used for components in the architecture for a wind farm solution.

Table 3. Components and Device Models in Wind Farm Architecture

| Component Role |

Component |

Description |

| Turbine nacelle switch, no HA |

Cisco Catalyst Industrial Ethernet (IE) 3400 Series Switch |

1G fiber ring with port channel connectivity to base switch. |

| Turbine nacelle switch, with HA |

Cisco Catalyst Industrial Ethernet (IE) 3400 Series Switch |

1G fiber ring for nacelle switch redundancy. |

| Turbine base switch |

Cisco Catalyst Industrial Ethernet (IE) 3400 Series Switch and/or Cisco Catalyst Industrial Ethernet (IE) 3100 Series Switch |

1G fiber ring for base switches in FAN. Up to 20 switches can be in the ring. 8, 9, or 10 switches in the ring are common in a deployment. |

| Farm area aggregation |

Cisco Catalyst 9300 Series switch Stack |

REP ring aggregation switch. Stack for HA. |

| OSS and ONSS core switch, with HA |

Cisco Catalyst 9500 Series switches with Stackwise Virtual (SVL) |

Offshore IT network core. Deployed with SVL for HA. |

| OSS IT network access switch |

Cisco Catalyst 9300 Series switch stack |

Consists of two switches for HA to provide access connectivity to OSS network infrastructure devices. |

| OSS firewall |

Cisco Secure Firewall 2100 or 4100 Series |

OSS network firewall. |

| ONSS WAN router |

Cisco Catalyst IR8300 Rugged Series Router or Cisco Catalyst 8000 Series Edge Platform |

Onshore substation WAN router |

| OT network sensor |

Combined Cisco Cyber Vision (CV) and Secure Equipment Access (SEA) network sensor on IE3400 Series Switches |

CV network sensors on all IE switches in the ring and FAN. |

| OT security dashboard |

Cisco Cyber Vision Center global and local virtual appliances |

CVC deployed globally and locally in control center and OSS network infrastructures, respectively. |

| Secure Remote Access |

Cisco Secure Equipment Access Cloud |

IoT Operations Dashboard (IoD) hosted in Cisco Cloud for configuring remote access sessions and settings. |

| Wireless LAN controller |

Cisco Catalyst 9800 Wireless Controller (WLC) |

Catalyst Wi-Fi network controller in OSS network infrastructure. |

| SCADA application server |

SCADA application server |

SCADA application server in OSS network infrastructure. |

| URWB gateway |

URWB IW9167E or IEC6400 Edge Compute Appliance |

URWB wireless network mesh end |

| Network management |

Cisco Catalyst Center |

Wind farm network management application in control center and DC. |

| Authentication, authorization, and accounting (AAA) |

Cisco ISE |

AAA and network policy administration. |

| IT and OT security management |

Cisco Secure Network Analytics (Stealthwatch) Manager and Flow Collector Virtual Edition |

Network flow analytics and security dashboard in control center. |

| Physical safety video server |

Cisco or third-party video server for IP cameras |

Cisco or third-party video server for IP cameras in control center |

| 4G-LTE or 5G connectivity for SOV |

Cisco Industrial Router 1101 with 4G-LTE or 5G SIM |

4G-LTE or 5G connectivity for SOV when in range of cellular connectivity close to shore. |

| OSS, FAN, or TAN wireless backhaul |

URWB IW9167E |

URWB infrastructure AP on OSS, FAN, or TAN for SOV wireless backhaul |

| OSS vessel wireless backhaul |

URWB IW9167E |

OSS vessel mesh point for connectivity into OSS or TAN. |

| Wi-Fi network access point |

C9120 AP or IW9167I |

Catalyst Wi-Fi network AP in TAN and OSS. |

| Hardened Wi-Fi access point |

Cisco IW6300 |

Catalyst Wi-Fi network AP in TAN and OSS. |

| Turbine operator SCADA network core switch |

Cisco Catalyst 9300 Series switches |

Consists of two switches in a stack with HSRP for Core network HA. Provides access connectivity to OSS turbine operator SCADA network infrastructure devices. |

| Farm area SCADA Network (FSN) rings aggregation switch |

Cisco Catalyst Industrial Ethernet 9300 Rugged Series switches |

Turbine operator Farm area SCADA network aggregation switch. Also provides 10G uplink core network resiliency using REP and PTP Grandmaster and Boundary Clock (GMC-BC). |

| Farm area SCADA Network (FSN) switch |

Cisco Catalyst Industrial Ethernet (IE) 3400 Series Switch or Cisco Catalyst Industrial Ethernet (IE) 3100 Series Switch |

1G fiber ring for SCADA network base switches in FSN. Up to 20 switches can be in the REP ring or in an MRP ring. |

| Turbine SCADA Network (TSN) switch |

Cisco Catalyst Industrial Ethernet (IE) 3400 Series Switch or Cisco Catalyst Industrial Ethernet (IE) 3100 Series Switch |

Turbine SCADA network nacelle switches in an HA topology (ring or port channel). |

| Compact ONSS WAN Edge router |

Cisco Catalyst IR1101 Rugged Series Router |

Compact Onshore substation WAN edge router for data center connectivity. |

| Compact ONSS WAN Headend Router |

Cisco Catalyst 8500 Series Edge platform |

Compact Onshore substation data center headend router for FlexVPN tunnels. |

| Compact ONSS Core Switch |

Cisco Catalyst Industrial Ethernet (IE) 3500 Series Switch |

Compact Onshore substation core layer 3 and turbine rings aggregation switch. |

Table 4. Components and Device Models in Wind Farm Architecture

| Component Role | Hardware Model | Version |
| --- | --- | --- |
| Turbine nacelle switch, no HA | IE3400-8P2S, IE3400-8T2S, IE3100-8T4S | 26.1.1 |
| Turbine nacelle switch, with HA | IE3400-8P2S, IE3400-8T2S, IE3100-8T4S | 26.1.1 |
| Turbine base switch | IE3400-8P2S, IE3400-8T2S, IE3100-8T4S | 26.1.1 |
| Farm area aggregation | C9300-24UX | 26.1.1 |
| OSS core switch, with HA | C9500-16X | 26.1.1 |
| OSS IT network access switch | C9300-24UX | 26.1.1 |
| ONSS core switch | C9300-24UX | 26.1.1 |
| OSS and ONSS DMZ firewall | Cisco Secure Firewall 2140 | 7.10.1 |
| Firewall management application | Cisco Secure Firewall Management Center Virtual Appliance | 7.0.1 |
| ONSS WAN edge router | Cisco Catalyst IR8340 Rugged Series Router | 26.1.1 |
| Network management application | Cisco Catalyst Center Appliance DN2-HW-APL | 2.3.6.0 |
| Unified Computing System (UCS) | UCS-C240-M5S | 3.1.3c |
| AAA server | Cisco ISE Virtual Appliance | 3.2 |
| CV network sensors | IoX Sensor App | 5.04.0 |
| OT security dashboard | Cisco Cyber Vision Center global and local virtual appliance | 5.04.0 |
| Wireless LAN controller | C9800-L-C-K9 | 26.1.1 |
| Cisco IW6300 ruggedized AP for Wi-Fi access | IW6300-AP | 26.1.1 |
| Cisco AP for Wi-Fi access | AIR-AP9120 or IW9167 | 26.1.1 |
| URWB mesh point | URWB IW9167E | 26.1.1 |
| URWB mesh gateway | URWB IW9167E or IEC6400 | 26.1.1 |
| URWB IW-Monitor | URWB IW-Monitor VM | v2.0 |
| IT and OT security management | Cisco Secure Network Analytics (Stealthwatch) Manager and Flow Collector Virtual Edition | 7.4.1 |
| Control center headend router | ASR-1002-HX | 17.3.4a |
| WAN management | Cisco SD-WAN Manager, SD-WAN Validator virtual appliances | 20.8.1 |

Table 5. Turbine Operator Network Cisco Hardware and Software versions Validated in this CVD

| Component Role | Hardware Model | Version |
| --- | --- | --- |
| Turbine Operator network core switch | C9300-24UX | 26.1.1 |
| Farm area SCADA network rings aggregation switch | IE-9320-22S2C4X | 26.1.1 |
| Turbine SCADA switch, no HA | IE3400-8P2S, IE3400-8T2S, IE3100-8T4S | 26.1.1 |
| Turbine SCADA switch, with HA | IE3400-8P2S, IE3400-8T2S, IE3100-8T4S | 26.1.1 |
| Turbine base switch in FSN | IE3400-8P2S, IE3400-8T2S, IE3100-8T4S | 26.1.1 |
| Compact Onshore Substation WAN edge router | IR1101-K9 | 26.1.1 |
| Compact Onshore Substation Headend router | C8500-12X | 17.15.1a |
| Compact Onshore Substation Core Switch | IE3500-8P3S | 26.1.1 |

Table 6. Third-party Hardware and Software Versions Validated in this CVD

| Component Role | Hardware Model | Version |
| --- | --- | --- |
| Turbine physical security (CCTV) camera | AXIS P3717-PLE | 10.3.0 |
| Video server for CCTV camera | Axis Device Manager (ADM) | 5.9.42 |
| CA, AD, DHCP, and DNS servers in control center | Microsoft Windows 2016 Server | Windows 2016 Server Edition |

Solution Design Considerations

This chapter includes the following topics:

● Turbine Base Switch Network Design

● Offshore Substation Network and Building Blocks

● Network VLANs and Routing Design

● SCADA Applications and Protocols

● Turbine Operator Network Design

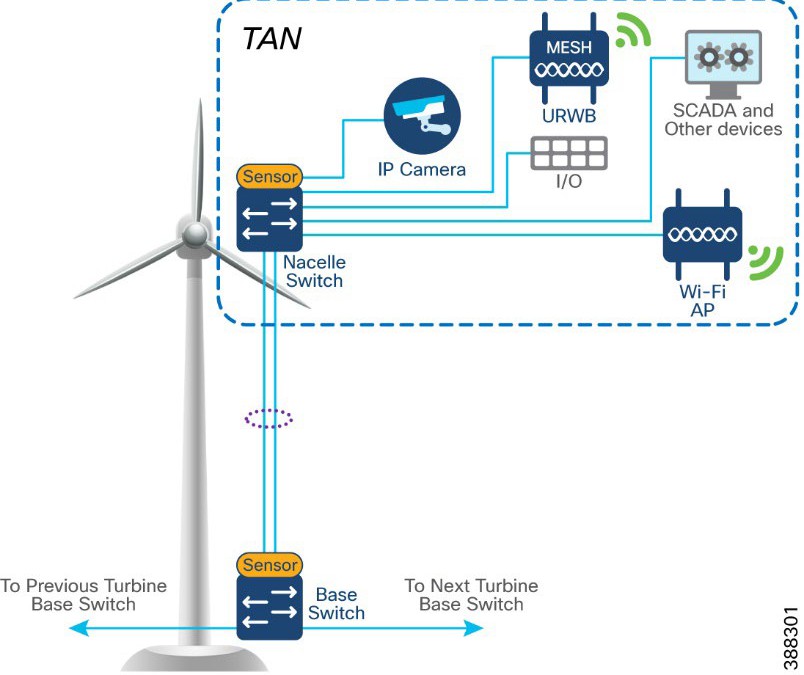

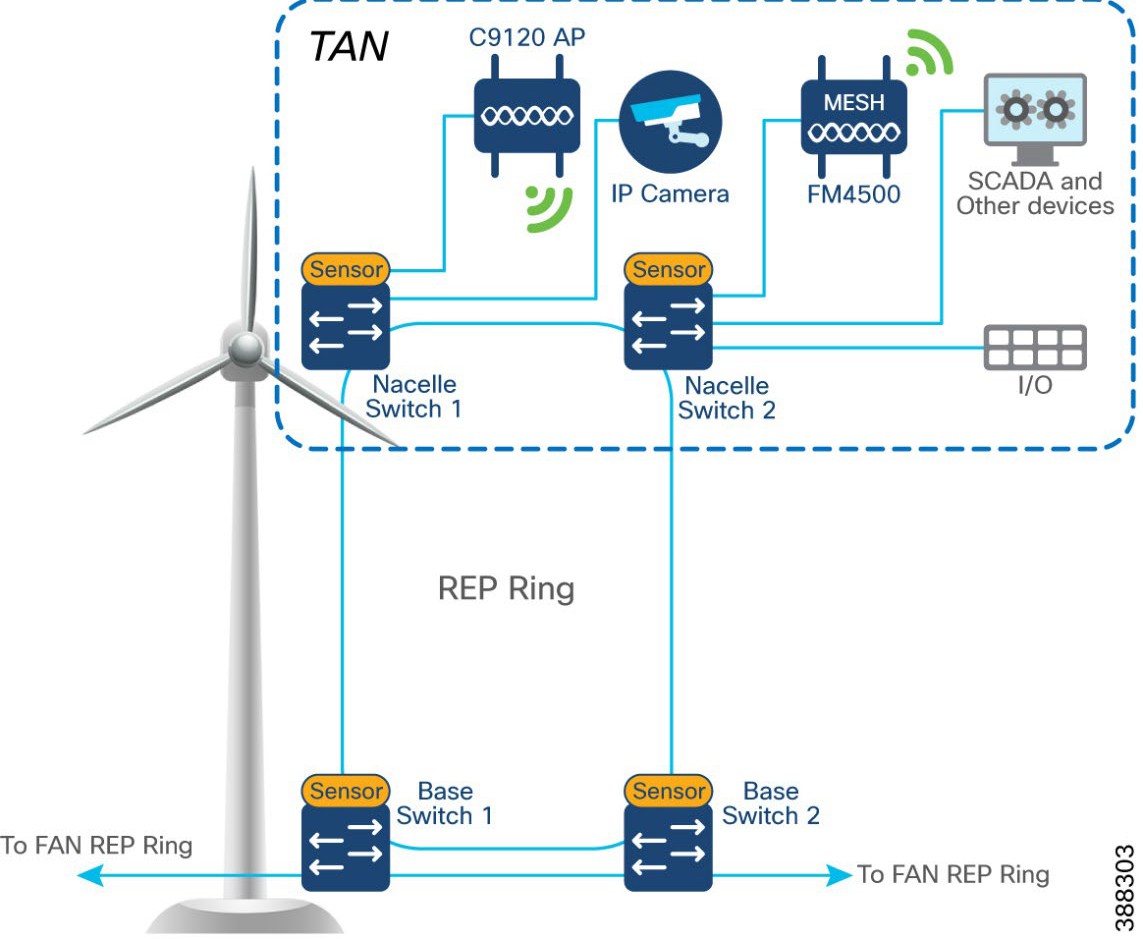

In offshore wind farms, each wind turbine has a Cisco IE3400 switch deployed at the turbine nacelle to provide offshore substation (OSS) network connectivity to various endpoints in the turbine. These endpoints include SCADA devices, PLC, I/O devices, CCTV cameras, and so on. The IE switch deployed in the turbine nacelle is also called a nacelle switch (NS). The NS with its OT and IT endpoints forms a turbine area network (TAN) in the wind farm solution architecture, as shown in Figure 3.


Table 7 lists the actors and traffic types in a TAN.

Table 7. TAN Actors and Traffic Types

| Actors | Traffic Type |
| --- | --- |
| CCTV camera | TAN to control center. CCTV camera in nacelle switch streaming live video to video server in OSS infrastructure or control center. |
| PLC and I/O | Traffic within the TAN. OT traffic between PLC and I/O in nacelle. TAN to base. PLC in base of turbine to I/O in nacelle. |
| Wi-Fi access point | TAN to OSS infrastructure and control center. Workforce AP in nacelle to WLC in OSS network. Provides corporate network access and guest internet access. |
| SCADA | TAN to OSS infrastructure. Management traffic between SCADA endpoints in OSS. |
| URWB | Offshore vessel wireless connectivity using URWB network from the TAN. |

TAN Non-HA Design Considerations

● A single Cisco IE3400 switch is deployed in each turbine nacelle as the nacelle Ethernet switch for the TAN non-HA design option, as shown in Figure 4.

● The nacelle switches connect to the turbine base switch in a Layer 2 star topology (non-HA), as shown in Figure 4.

● An LACP port channel with two member links to a base switch provides link-level redundancy to the TAN.

● Multiple VLANs for segmenting TAN devices are configured in the NS. Examples include CCTV camera VLAN, OT VLAN for SCADA endpoints, Wi-Fi AP management VLAN, management VLAN (FTP/SSH), URWB VLAN for vessel connectivity, voice VLAN for VoIP phones, and marine systems VLAN.

● First-hop security protocols with device authentication using MAB or Dot1x are configured for securing TAN endpoints.

● The Layer 3 gateway for all VLANs in the NS is configured in the OSS core switch (C9500 switch).

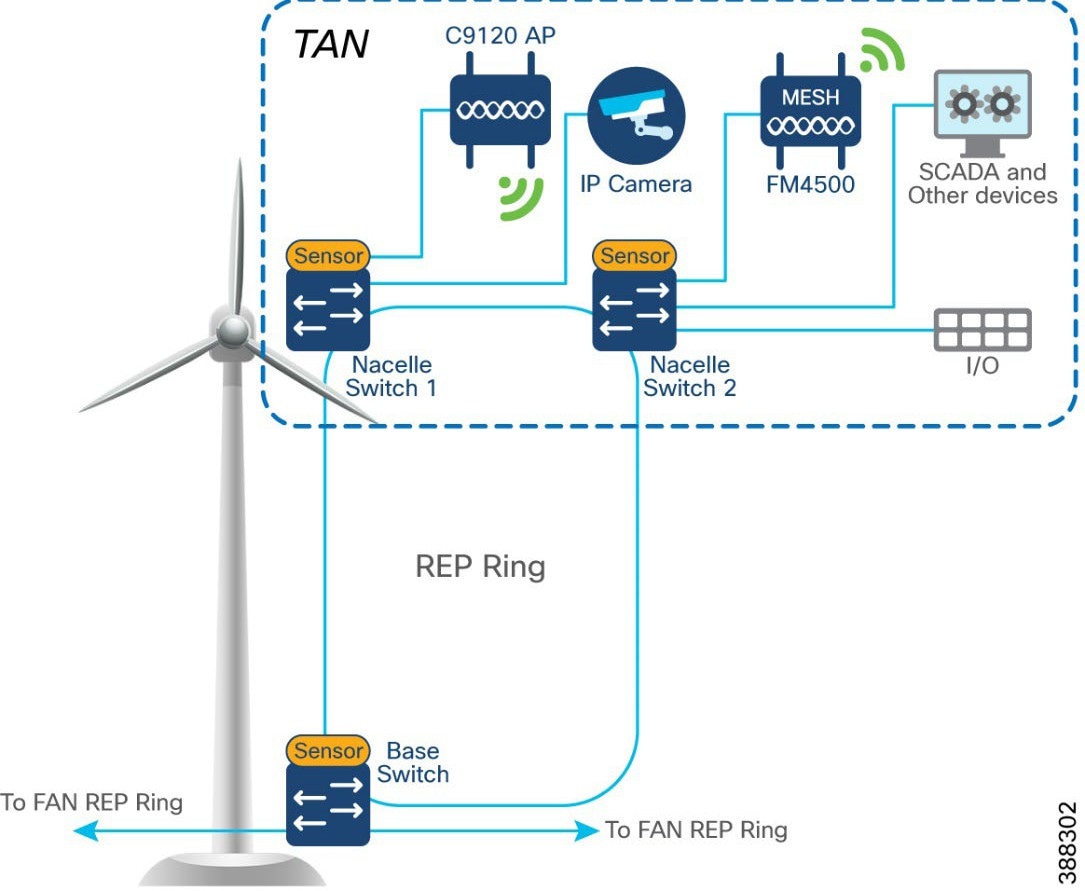

TAN High Availability Design with REP

A single IE3400 nacelle switch in the TAN is a single point of failure for TAN endpoints. To provide a highly available TAN, two nacelle switches are deployed for TAN endpoint network connectivity. In addition, a redundancy protocol is configured.

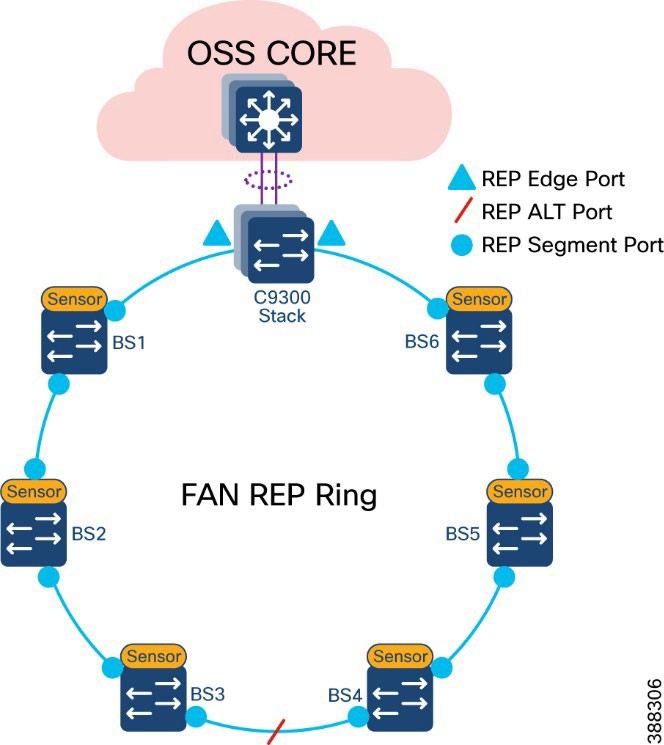

Resilient Ethernet Protocol Ring

Resilient Ethernet Protocol (REP) is a Cisco proprietary protocol that provides an alternative to Spanning Tree Protocol (STP) for controlling network loops, handling link failures, and improving convergence time. REP controls a group of ports that are connected in a segment, ensures that the segment does not create bridging loops, and responds to link failures within the segment. REP provides a basis for constructing complex networks and supports VLAN load balancing. It is the preferred resiliency protocol for IoT applications.

A REP segment is a chain of ports that are connected to each other and configured with a segment ID. Each segment consists of standard (non-edge) segment ports and two user-configured edge ports. The preferred alternate port selected by REP is blocked during normal operation of the ring. If a link in the REP segment fails, REP automatically unblocks the preferred alternate port, which provides an alternate path around the failure. When the failed link recovers, the recovered port becomes the preferred alternate port and is blocked by REP. In this way, recovery happens with minimal convergence time.
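The blocking behavior described above can be illustrated with a small model. The following Python sketch is not a REP implementation: it treats a closed ring as a list of links, keeps one link blocked at the alternate port, and shows that a single link failure leaves every switch reachable once the alternate port forwards. The class name, method names, and switch labels are assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class RepSegment:
    """Toy model of a closed ring: link i joins switch i to switch i+1 (wrapping)."""
    switches: list[str]
    alternate_link: int                      # link held blocked in normal operation
    failed_links: set[int] = field(default_factory=set)

    def links(self) -> list[tuple[str, str]]:
        n = len(self.switches)
        return [(self.switches[i], self.switches[(i + 1) % n]) for i in range(n)]

    def blocked_links(self) -> set[int]:
        # No failure: the alternate port blocks its link to break the loop.
        # After a failure: the break is at the failed link, so the alternate port forwards.
        return set(self.failed_links) if self.failed_links else {self.alternate_link}

    def forwarding_links(self) -> list[tuple[str, str]]:
        blocked = self.blocked_links()
        return [link for i, link in enumerate(self.links()) if i not in blocked]

    def fail(self, link: int) -> None:
        self.failed_links.add(link)

    def recover(self, link: int) -> None:
        # Following the description in the text, the recovered link becomes
        # the new blocked (alternate) link once no failures remain.
        self.failed_links.discard(link)
        if not self.failed_links:
            self.alternate_link = link

    def is_connected(self) -> bool:
        # Depth-first walk over forwarding links, starting at the first switch.
        adjacency = {s: set() for s in self.switches}
        for a, b in self.forwarding_links():
            adjacency[a].add(b)
            adjacency[b].add(a)
        seen, stack = set(), [self.switches[0]]
        while stack:
            node = stack.pop()
            if node not in seen:
                seen.add(node)
                stack.extend(adjacency[node] - seen)
        return seen == set(self.switches)
```

In this model a ring of four devices with one blocked link forwards on three links, which is loop-free. Failing any single link keeps `is_connected()` true, while two simultaneous failures split the ring.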

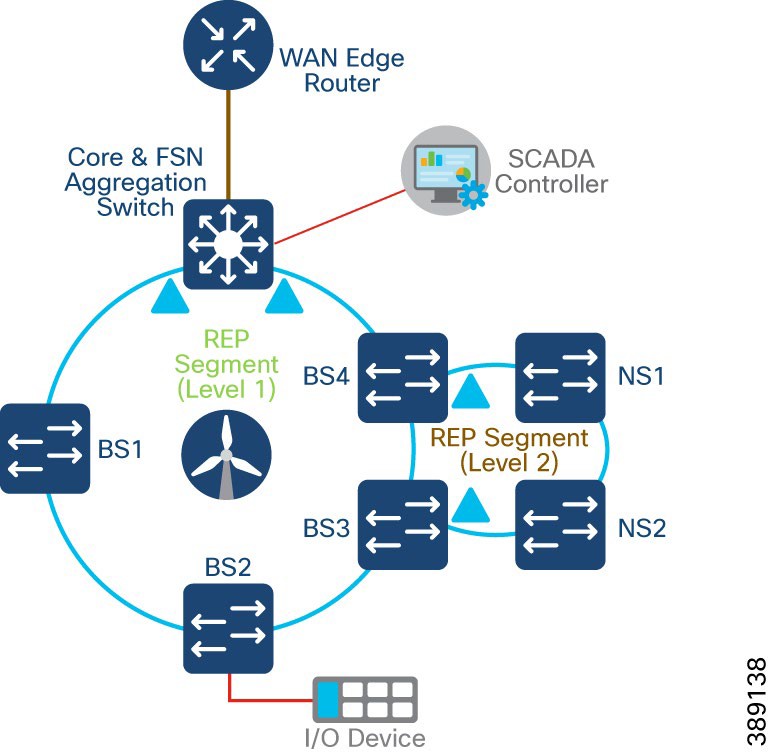

Two uplink ports from two nacelle switches deployed for HA in TAN are connected to a turbine base switch.

There are two options for TAN high availability design. In the first option, shown in Figure 4, a closed ring topology of two nacelle switches connects to a single turbine base switch. This arrangement forms a subtended REP ring to the FAN main ring.


In the second option, shown in Figure 5, the uplinks from two nacelle switches are connected to two different base switches in a TAN, which provides redundancy for the turbine base switch network and the TAN. In this option:

● An open ring topology of two nacelle switches connects to two turbine base switches.

● A subtended REP ring off the FAN main REP ring of base switches is formed.


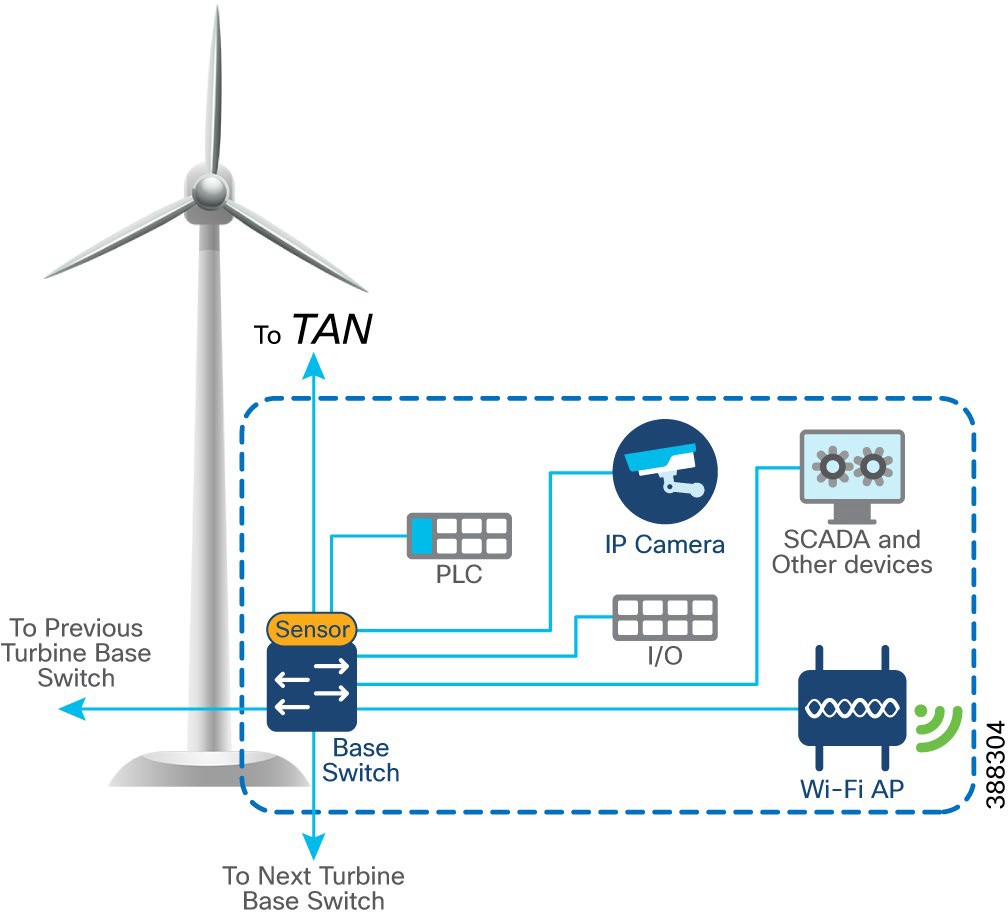

In offshore wind farms, each wind turbine has an IE3400 switch that is deployed at the turbine base to provide OSS network connectivity to various endpoints in the turbine base. These endpoints include SCADA devices, PLC, I/O devices, CCTV cameras, the TAN, and so on. The IE switch that is deployed in the turbine base is also called the base switch (BS). The BS, with its OT and IT endpoints, forms a Turbine Base Network (TBN) in the wind farm solution architecture, as shown in Figure 6.


Table 8 lists the actors and traffic type in a turbine base switch network.

Table 8. Turbine Base Network Actors and Traffic Types

| Actors | Traffic Type |
| --- | --- |
| CCTV camera | Base to control center. CCTV camera in base switch streaming live video to video server in OSS infrastructure or control center. |
| PLC and I/O controller | Traffic within base. OT traffic between PLC and I/O in base. Base to base. PLC in base of turbine to I/O in base. |
| Wi-Fi access point | Base to OSS infrastructure or control center. Workforce AP in base switch communicating with the WLC in the OSS infrastructure. Provides connectivity to the OSS and DC network based on needs and provides guest internet access. |
| SCADA | Base to OSS Infrastructure. Management traffic between SCADA endpoints in OSS. |

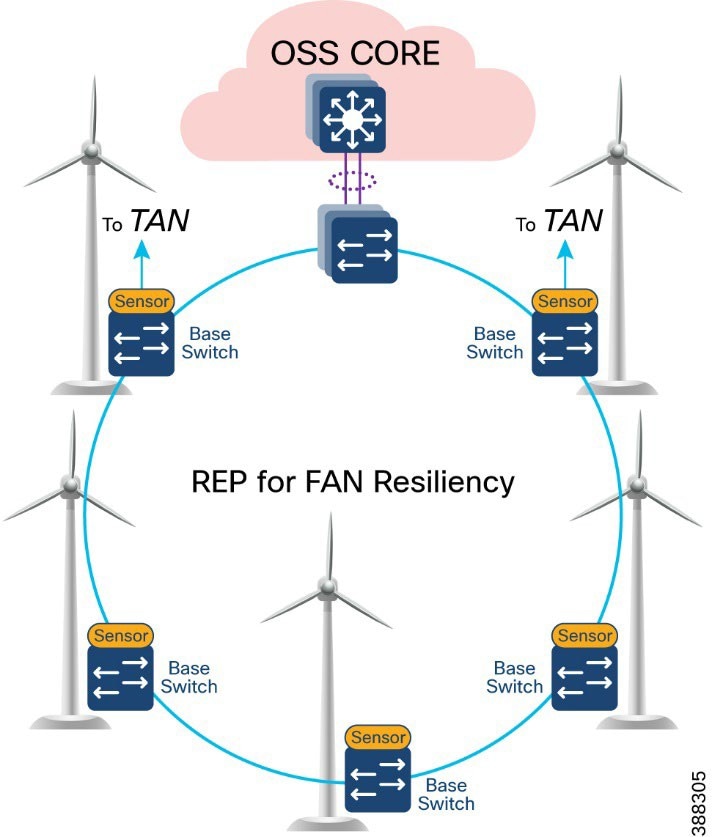

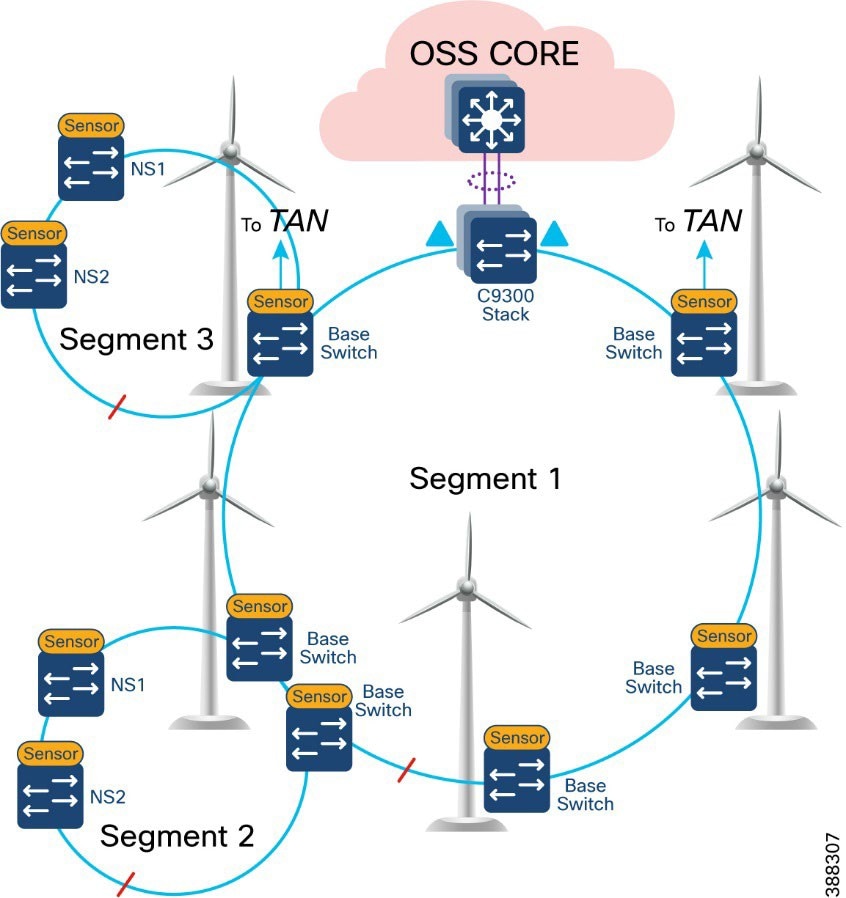

In offshore wind farms, the base switch from each wind turbine is connected in a ring topology using 1G fiber cable with a Catalyst 9300 switch stack to form a farm area network (FAN) ring. REP is configured in the FAN ring to provide resiliency and faster network convergence if a link in the REP segment fails.

Figure 7 shows a FAN ring aggregating to a pair of Cisco Catalyst 9300 switches in a stack configuration. A Catalyst 9300 stack aggregates all FAN rings in an offshore wind farm.


● Cisco Industrial Ethernet 3400 switches serve as turbine base Ethernet switches.

● A layer 2 closed ring of turbine base switches connected via 1G fiber forms a FAN.

● FAN base switches aggregate the subtended REP rings from TANs that are deployed with HA.

● A FAN ring consists of a maximum of 18 base switches. A Catalyst 9300 stack aggregates up to 10 FAN rings, depending on the Catalyst 9300 model and port density.

● REP is used for base switch and FAN resiliency; REP edge ports are configured on a Catalyst 9300 stack in OSS aggregation.

● Multiple VLANs are configured for network segmentation of TAN and FAN devices. Examples include CCTV camera VLAN, OT VLAN for SCADA endpoints, management VLAN (FTP and SSH), URWB VLAN for vessel connectivity, Wi-Fi AP management VLAN, voice VLAN for VoIP Phones, and marine systems VLAN.

A closed REP ring of FAN forms a main REP segment to forward all VLAN traffic in an offshore wind farm network. Primary and secondary REP edge ports are configured on an OSS aggregation switch stack (Catalyst 9300), and an alternate port is configured in the middle of the ring. See Figure 8.

A FAN REP ring can be provisioned by using the Cisco Catalyst Center REP workflow, which automates the REP configuration from daisy-chained IE switches. For more detailed information about FAN REP ring and subtended REP ring provisioning using Cisco Catalyst Center, see Chapter 5: Network Management and Automation.


A TAN REP ring aggregating to a turbine base switch or a pair of base switches, as discussed in TAN High Availability Design with REP, creates a subtended REP ring, or ring of REP rings, in an offshore wind farm network. When a closed or open REP ring is configured with REP Topology Change Notification (TCN), REP neighbors within the segment are notified of any topology changes. At the edge, REP can propagate the TCN to other REP segments.

Figure 9 shows the FAN main REP ring and subtended REP rings design in the wind farm solution architecture.


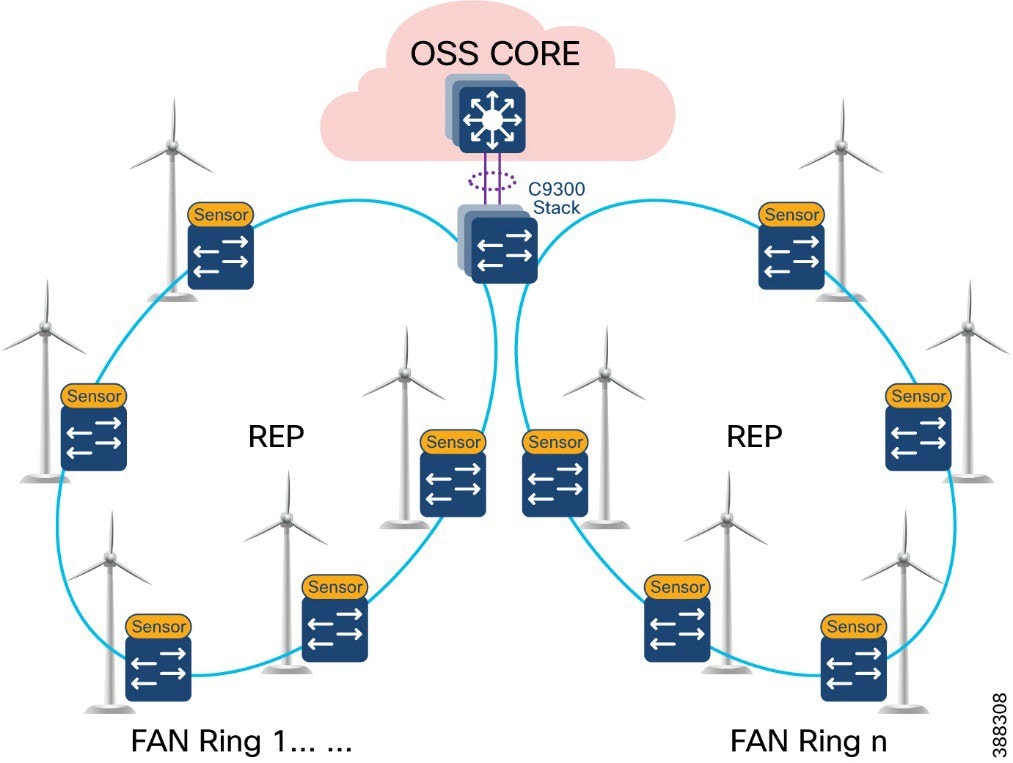

An offshore wind farm can have more than 200 turbines, and each turbine can have more than 2 base switches that connect to the OSS network. We recommend that a deployment have a FAN ring size of no more than 20 IE switches. Multiple such FAN rings should be aggregated to access offshore substation (OSS) and onshore substation (ONSS) IT networks.

The FAN aggregation infrastructure is composed of Cisco Catalyst 9300 Series switches, typically with two of these switches in a physical stack, that are capable of providing 10G uplinks to OSS and ONSS networks. A stack of two Catalyst 9300 switches physically located in an OSS network connects turbine base switches in a ring via fiber cables (turbine string cables) and aggregates layer 2 traffic from each ring to upper layers of the wind farm network infrastructure, for example, to an OSS core switch.

Figure 10 shows the FAN aggregation design using a stack of Catalyst 9300 Series switches to aggregate FAN rings and their traffic from and to offshore wind turbines.


We recommend that between one and nine FAN rings be aggregated to a stack of Catalyst 9300 switches for optimal network performance. If there are more than 200 turbines or base switches in a wind farm, another stack of two Catalyst 9300 switches can be added to the FAN aggregation network in the offshore substation (OSS).
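The sizing guidance in this section can be turned into a quick planning calculation. The following Python sketch is only an illustration: the function name and the returned dictionary layout are assumptions, the default ring size of 20 comes from the "up to 20 switches" figure in the components table, and the default of 10 rings per stack comes from the stated maximum for a Catalyst 9300 stack. Pass `max_rings_per_stack=9` to follow the more conservative recommendation of one to nine rings per stack.

```python
import math


def plan_fan_aggregation(base_switches: int,
                         max_ring_size: int = 20,
                         max_rings_per_stack: int = 10) -> dict:
    """Estimate how many FAN rings and Catalyst 9300 stacks a wind farm needs."""
    if base_switches < 1 or max_ring_size < 1 or max_rings_per_stack < 1:
        raise ValueError("all inputs must be positive integers")

    rings = math.ceil(base_switches / max_ring_size)
    stacks = math.ceil(rings / max_rings_per_stack)

    # Spread the base switches as evenly as possible across the rings.
    base, extra = divmod(base_switches, rings)
    ring_sizes = [base + 1] * extra + [base] * (rings - extra)

    return {"rings": rings, "stacks": stacks, "ring_sizes": ring_sizes}


plan = plan_fan_aggregation(201)
```

With the default limits, 200 base switches fit in 10 rings on one stack, and the 201st base switch forces an eleventh ring and therefore a second stack. This matches the guidance to add a stack when a wind farm exceeds 200 turbines or base switches.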

Offshore Substation Network and Building Blocks

This section discusses the design for a wind farm OSS network. The OSS network has the following building blocks:

● OSS core network: Provides network layer 3 routing across offshore and onshore substations

● OSS DMZ and third-party or other networks: Provides secure remote access for corporate employees and third-party vendors to OSS assets

● OSS infrastructure network: Hosts OSS infrastructure services and application servers

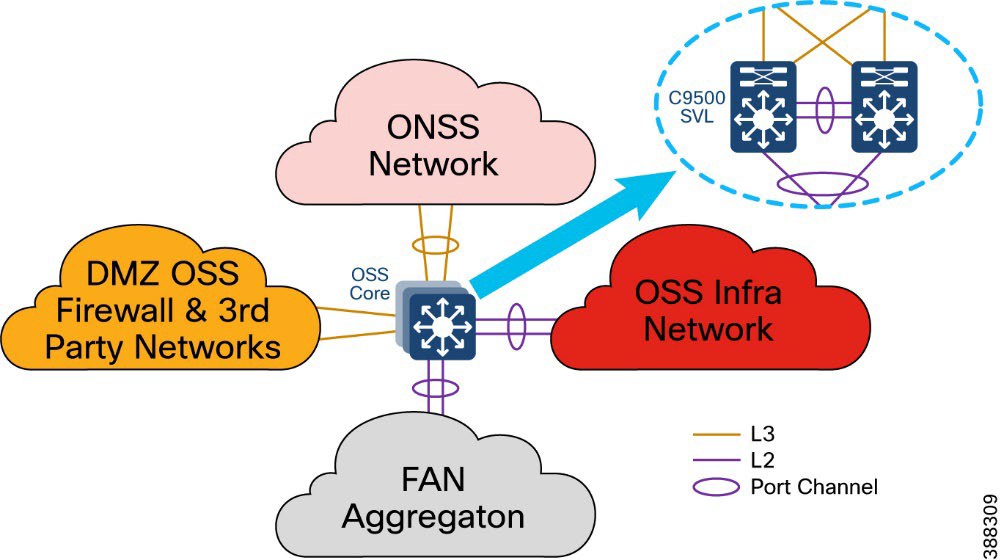

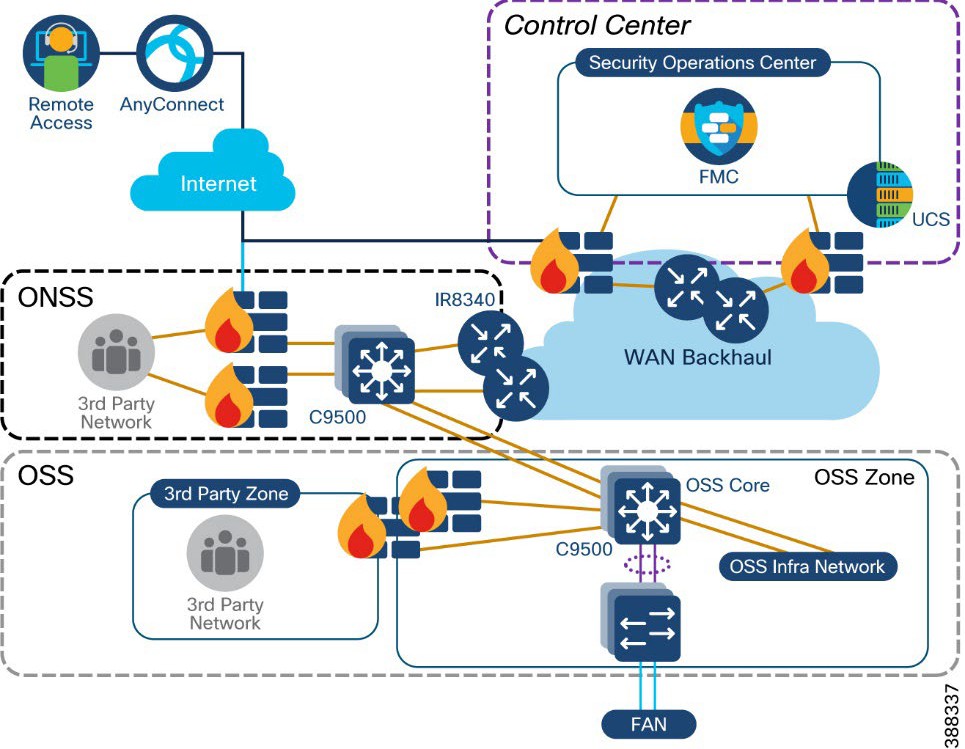

An offshore substation core network is composed of a pair of suitably sized layer 3 devices that provide resilient core networking and routing capabilities. Multilayer switches may be used as core devices, even though the primary role of the core is routing. In the wind farm solution architecture, Cisco Catalyst 9500 Stackwise Virtual (SVL) switches are used as OSS core network switches.

The OSS core connects to multiple components, and this connection should be resilient, providing higher bandwidth (10Gbps) layer 3 links. The OSS core network connects the following building blocks in an OSS network and provides connectivity through fiber uplinks to the onshore substation network (ONSS), as shown in Figure 11.

● ONSS network: Connects to ONSS core switches.

● OSS infrastructure network: Provides layer 2 access switch connectivity to infrastructure applications such as CVC, SCADA servers, WLC, and so on.

● FAN aggregation: Aggregates FAN rings in a wind farm

● OSS DMZ and firewall: Connects to third-party networks (for example, turbine vendor SCADA networks such as GE, Vestas, and others, substation automation networks, and export cable HVDC and AC systems).


In Figure 11, a pair of Cisco Catalyst 9500 switches in Stackwise Virtual (SVL) configuration provides core network high availability across OSS networks with layer 3 links to the OSS DMZ firewall and ONSS core. These layer 3 links can be configured as Equal-Cost Multi Pathing (ECMP) routing links or links bundled in a layer 3 port channel.
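To illustrate how ECMP spreads traffic across equal-cost layer 3 links, the following Python sketch hashes a flow's 5-tuple and uses the result to pick one link. It is a conceptual model only: switches compute this hash in hardware with their own algorithm, SHA-256 is used here simply as a deterministic stand-in, and the function name and interface names are hypothetical.

```python
import hashlib


def pick_ecmp_link(src_ip: str, dst_ip: str, protocol: int,
                   src_port: int, dst_port: int, links: list[str]) -> str:
    """Map a flow (5-tuple) onto one of several equal-cost links."""
    if not links:
        raise ValueError("at least one link is required")

    # All packets of a flow share the same 5-tuple, so they hash to the same
    # link and stay in order; different flows spread across the links.
    key = f"{src_ip}|{dst_ip}|{protocol}|{src_port}|{dst_port}".encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:4], "big") % len(links)
    return links[index]


# Hypothetical uplinks from the OSS core toward the ONSS core.
uplinks = ["TenGigabitEthernet1/0/1", "TenGigabitEthernet2/0/1"]
chosen = pick_ecmp_link("10.1.1.10", "10.2.2.20", 6, 49152, 502, uplinks)
```

Because the choice depends only on the flow identifiers, a given flow always takes the same link, while the aggregate load is shared across the bundle of equal-cost paths.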

The C9500 SVL switch connects to the OSS infrastructure and FAN aggregation switches using layer 2 port channels, with each port channel bundling two 10 Gigabit Ethernet interfaces.

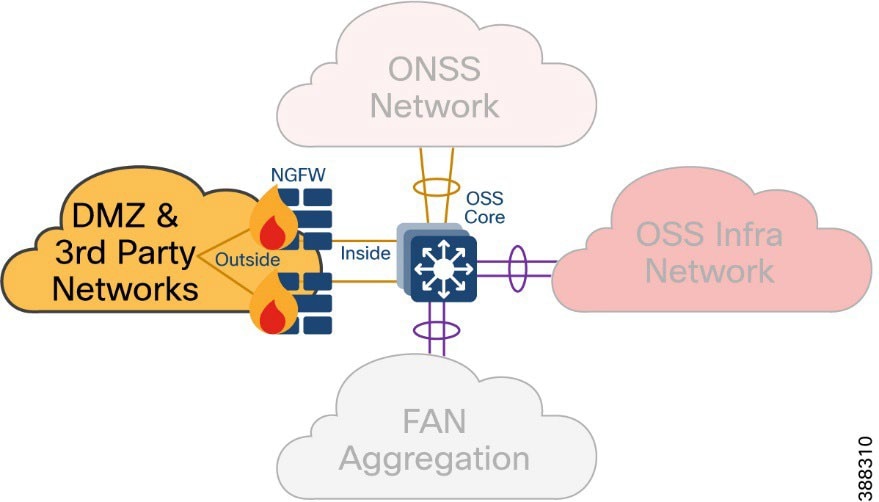

OSS DMZ and Third-Party Network

A DMZ in a wind farm OSS network provides a layer of security for the internal network by terminating externally connected services at the DMZ and allowing only permitted services to reach the internal network nodes.

Any network service that runs as a server that communicates with an external network or the Internet is a candidate for placement in the DMZ. Alternatively, these servers can be placed in a data center and be reachable only from the external network after being quarantined at the DMZ.

Cisco Next-Generation Firewall (NGFW) is deployed with outside interface connectivity to third-party turbine vendor networks and inside interface connectivity to the OSS core network, as shown in Figure 12. A Cisco Secure Firewall Management Center in the control center centrally manages all Cisco Secure Firewall instances in the OSS and ONSS networks.


The OSS DMZ is composed of a resilient pair of Cisco NGFW Secure Firewall 2100 or 4100 Series appliances. The Cisco Secure Firewall in OSS DMZ provides OSS network security protection from outside vendor networks.

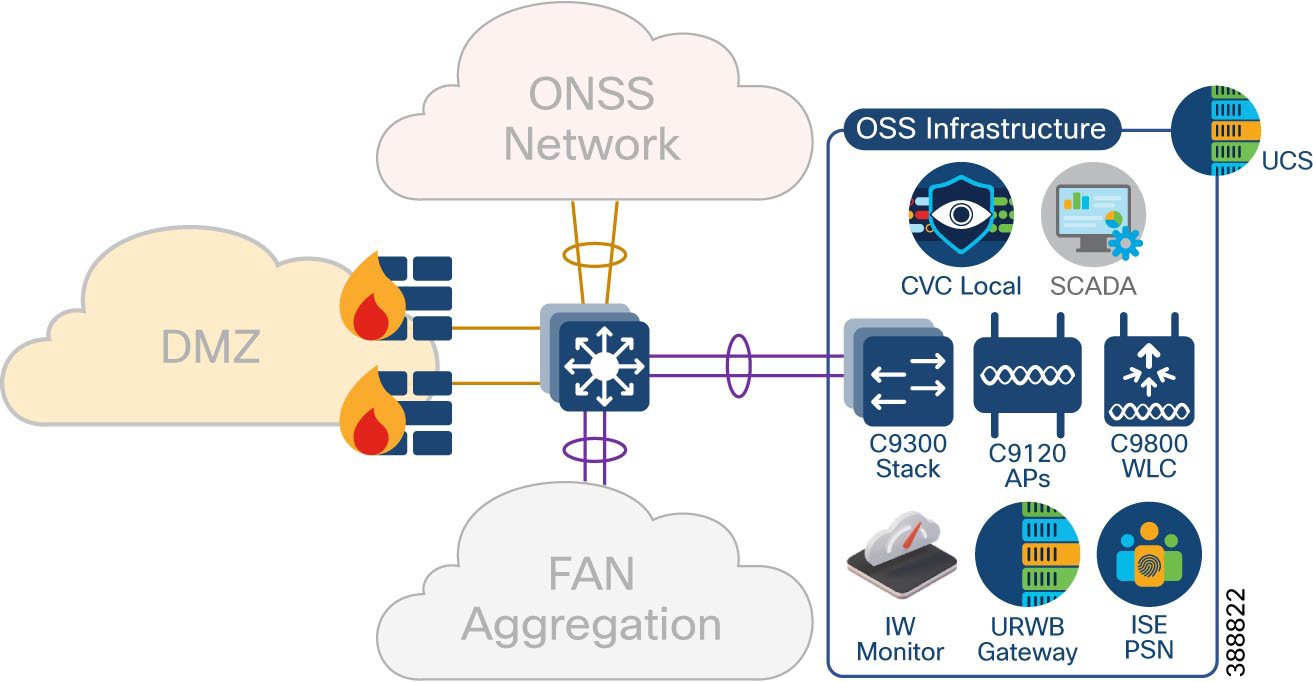

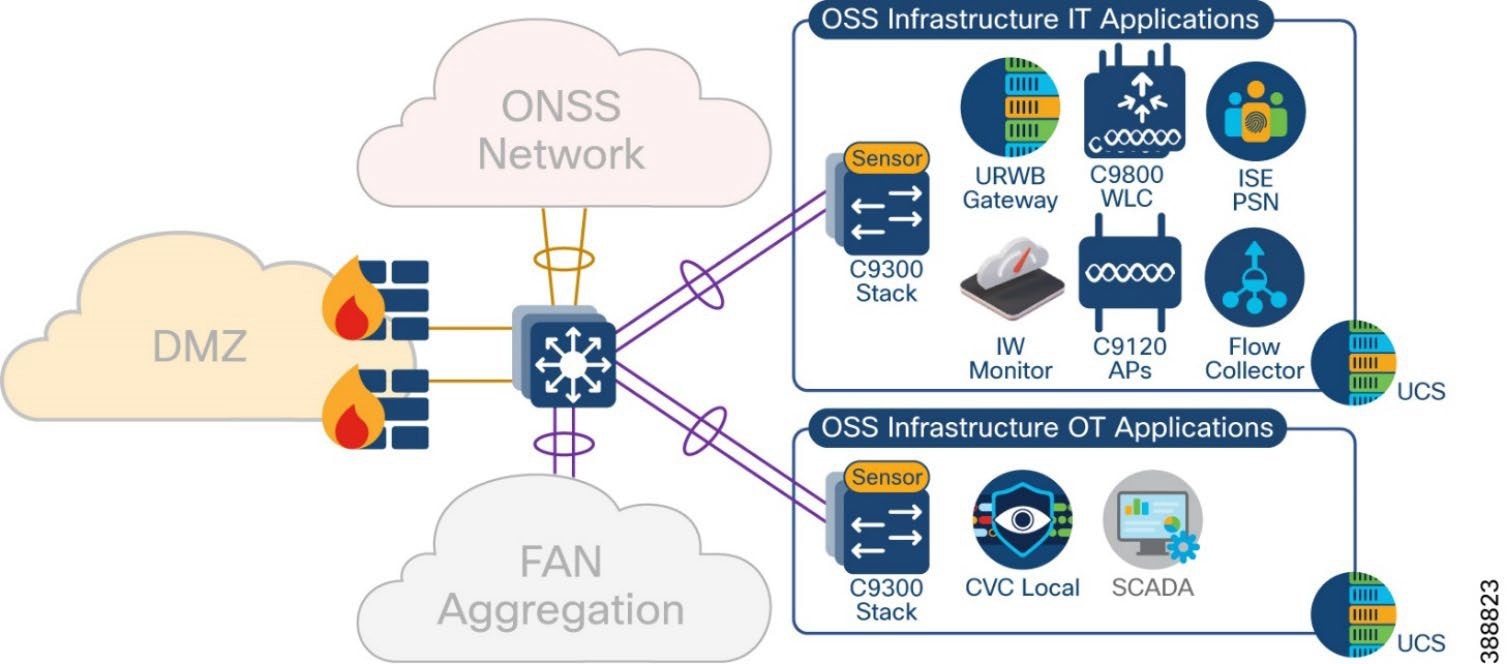

This section covers various infrastructure components and application servers in a wind farm network. The OSS infrastructure is composed of a set of resources that are accessible by devices or endpoints across the FAN and TAN. The OSS infrastructure is deployed with a pair of Cisco Catalyst 9300 Series switches in a stack to extend access to various applications and servers, as shown in Figure 13 as Option 1.

You also can deploy the OSS infrastructure with a separate access switch stack and UCS server for wind farm OSS IT and OT applications, respectively, as shown in Figure 13 as Option 2. The OSS infrastructure consists of:

● One or more Cisco Unified Computing System (UCS) servers for hosting virtual machines for the applications

● One Cisco Cyber Vision Center (CVC) Local

● One or more ISE PSNs*

● Two URWB gateways (IW9167E or IEC6400)

● Two Catalyst 9800 WLCs

● Four IW9167E devices with 90-degree horn antennas

● IW6300 or C9120 APs for Wi-Fi access

● One URWB IW monitor

● SCADA server application

Figure 13 shows the wind farm OSS infrastructure network and its components.


*ISE PSN may optionally be deployed at the OSS infrastructure network for the distributed deployment of ISE with PAN at the control center. You also may choose to decentralize PSNs if there is a latency concern.

Figure 14 shows the wind farm OSS infrastructure network option for IT and OT applications separated into two access switch stacks and UCS servers.


In a wind farm network, an onshore substation (ONSS) is a renewable energy site that is normally located in a remote area where a communication network is not readily available.

Generally, offshore substations connect to ONSSs in rural locations where access to backhaul technologies is limited. While offshore to onshore connectivity is served by fiber optic cable, the backhaul from the onshore location is more challenging and often relies on service provider network availability for services such as fiber, MPLS, metro Ethernet, and so on.

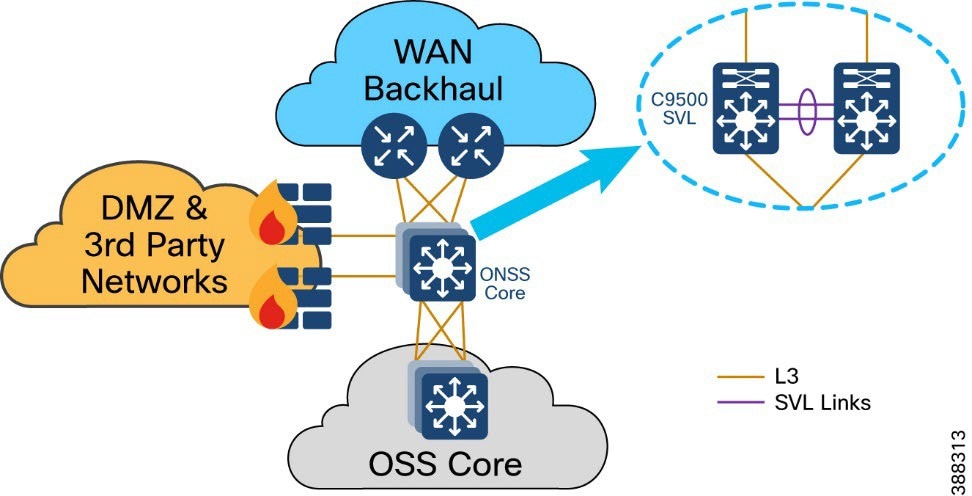

In the wind farm solution architecture, Cisco Catalyst 9500 Stackwise Virtual (SVL) switches are used as ONSS core network switches. The ONSS core connects to multiple components. The connections should be resilient and provide higher bandwidth (10Gbps) and layer 3 links for scalable L3 routing.

Figure 15 shows the building blocks in an ONSS network that the ONSS core network connects to.


ONSS network building blocks include:

● OSS network: Connects to an OSS core switch via 10 Gb fiber links

● ONSS DMZ and firewall: Connects to third-party networks (for example, turbine vendor SCADA network, power control and metering network, export cable HVAC and DC system)

● WAN backhaul: Connects wind farm data center and control center to the ONSS via service provider MPLS, 4G LTE, and so on.

The ONSS DMZ is similar to the OSS DMZ that is discussed in OSS DMZ and Third-Party Network. WAN backhaul and control center are discussed in detail in the following section.

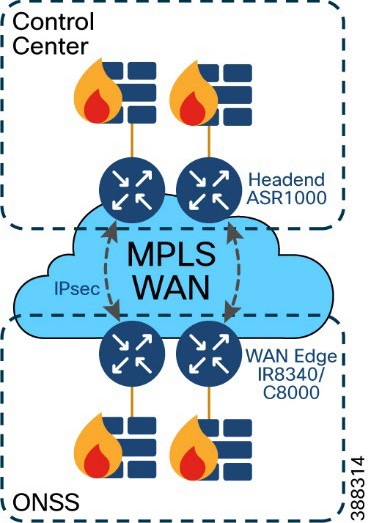

This section discusses wind farm WAN backhaul connectivity in an onshore substation. The wind farm WAN often is a dedicated WAN infrastructure that connects the transmission service operator (TSO) control center with various substations and other field networks and assets. Wind farm WAN connections can include a variety of technologies, such as cellular LTE or 5G options for public backhaul, fiber ports to connect wind farm operator or utility owned private networks, leased lines or MPLS PE connectivity options, and legacy multilink PPP backhaul aggregating multiple T1/E1 circuits.

The ONSS WAN router can provide inline firewall (zone-based firewall) functionality, or a dedicated firewall can be placed beyond the substation router to protect wind farm assets. This approach results in a unique design in which a DMZ is required at the substation edge. All communications into and out of the substation network must pass through the DMZ firewall. The zone traffic egressing the substation edge should be encrypted using IPsec and put into separate logical networks using Layer 3 virtual private network (L3VPN) technology, as shown in Figure 16.

A WAN tier aggregates the wind farm operator’s control center and onshore and offshore substations. A Cisco IR8340 or C8000 Series Router deployed as an ONSS WAN edge router serves as an interface between the onshore substation and the control center.


WAN circuits and backhaul failover options can be designed, provisioned, and managed using Cisco SD-WAN.

For more information, see Cisco SD-WAN Design Guide.

The wind farm WAN backhaul design is similar to the Cisco Substation Automation Solution WAN backhaul design. For more information about WAN backhaul design, see Substation Automation Design Guide – The New Digital Substation.

A wind farm asset operator’s control center hosts multiple IT and OT applications with other network infrastructure servers. All communications to the control center are secured by using a pair of firewalls in HA deployment and a pair of Cisco ASR1000 Series routers acting as headend or hub routers. Cisco ASR1000 Series routers terminate all IPsec tunnels from remote substation WAN edge routers.

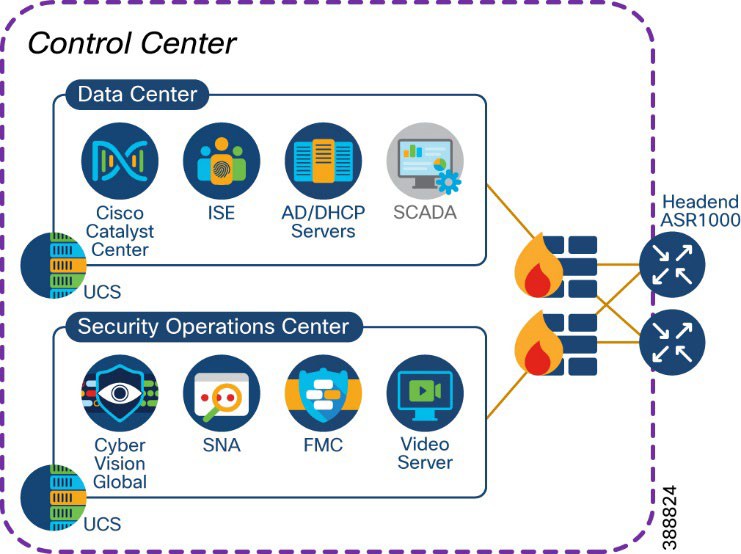

Figure 17 shows a wind farm control center with its IT and OT applications and servers.


The control center network consists of:

● One or more Cisco ASR1000 Series routers for WAN headend

● One or more 2100 or 4100 Series firewalls

● One or more Cisco Unified Computing System (UCS) servers for hosting virtual machines for applications

● One Cisco Catalyst Center for network management

● One or more Cisco ISE policy administration node (PAN)*

● One centralized Active Directory

● One centralized DHCP server

● One network time protocol (NTP) server

● One SCADA server application for wind farm turbine control

● One video server for CCTV

● One Cyber Vision Global

● One Cisco Centralized Secure Firewall Management Center

● One Cisco Secure Network Analytics Manager (SMC)

* PSN may optionally be deployed at the OSS Infrastructure network for the distributed deployment of ISE with the PAN located at the control center. You may also choose to decentralize the PSN whenever there is a concern about latency.

Network VLANs and Routing Design

This section covers the different VLANs in a wind farm network and virtual routing and forwarding (VRF) for layer 3 routing between OSS and ONSS core networks. The wind farm network is segmented by using VLANs for various endpoints and applications traffic. There is a dedicated VLAN and VRF for each service, endpoint, or application traffic in the network. Table 9 summarizes the design guidance for creating multiple VRFs and VLANs in the network.

Table 9. VLANs and VRFs in the Wind Farm Network Design

| VRF | VLAN Description |
| --- | --- |
| Management VRF (VRF for network management traffic) | Network device management VLAN(s); Wi-Fi and URWB AP management VLAN(s); FAN and TAN REP ring administrative VLAN(s); Cyber Vision (CV) collection network VLAN(s) |
| Video and voice VRF (VRF for CCTV cameras and IP telephony voice traffic) | VLANs for CCTV cameras in FAN and TAN; IP telephony devices voice VLAN |
| Wi-Fi access VRF | Employee and contractor Wi-Fi access VLAN; guest Wi-Fi access VLAN |
| URWB traffic VRF | URWB traffic VLAN |
| Operational technology (OT) (VRF for all renewables and OT traffic in the network) | VLANs for SCADA traffic such as weather systems, HVAC, fire detection, lightning detection, and other systems such as wildlife monitoring; VLANs for automation systems such as I/O controllers, PLCs, and so on |
| Internet VRF (VRF for device Internet access from the wind farm network) | DMZ VLANs for Internet traffic routing in OSS and ONSS networks |
| Global routing table (GRT) | VLANs local to OSS network (not to be routed); VLANs local to ONSS network (not to be routed) |

A VRF creates a separate routing and forwarding table in the network for IP routing, which is used instead of a default global routing table (GRT). A VRF provides high-level network segmentation across multiple services or traffic in the network. Each VLAN layer 3 interface (SVI) is created and assigned to a VRF for layer 3 routing in the OSS and ONSS core switches.
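The segmentation rule in Table 9 can be expressed as a simple lookup. The following Python sketch is a simplified model, not device configuration: the short VRF and VLAN labels are hypothetical names derived from the table, and the model assumes no route leaking or firewall-mediated paths between VRFs.

```python
# VRF -> VLAN groups, abbreviated from Table 9 (labels are illustrative only).
VRF_VLANS = {
    "management": ["device-mgmt", "ap-mgmt", "rep-admin", "cv-collection"],
    "video-voice": ["cctv", "voice"],
    "wifi-access": ["employee-wifi", "guest-wifi"],
    "urwb": ["urwb-traffic"],
    "ot": ["scada", "automation"],
    "internet": ["dmz"],
}

# Reverse index: each VLAN belongs to exactly one VRF.
VLAN_TO_VRF = {}
for vrf_name, vlan_names in VRF_VLANS.items():
    for vlan_name in vlan_names:
        if vlan_name in VLAN_TO_VRF:
            raise ValueError(f"VLAN {vlan_name} is assigned to two VRFs")
        VLAN_TO_VRF[vlan_name] = vrf_name


def routed_together(vlan_a: str, vlan_b: str) -> bool:
    """Return True when both VLANs share a VRF, and so share a routing table."""
    return VLAN_TO_VRF[vlan_a] == VLAN_TO_VRF[vlan_b]
```

In this model, CCTV and voice VLANs can be routed to each other because they share the video and voice VRF, while a SCADA VLAN and the guest Wi-Fi VLAN have no common routing table.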

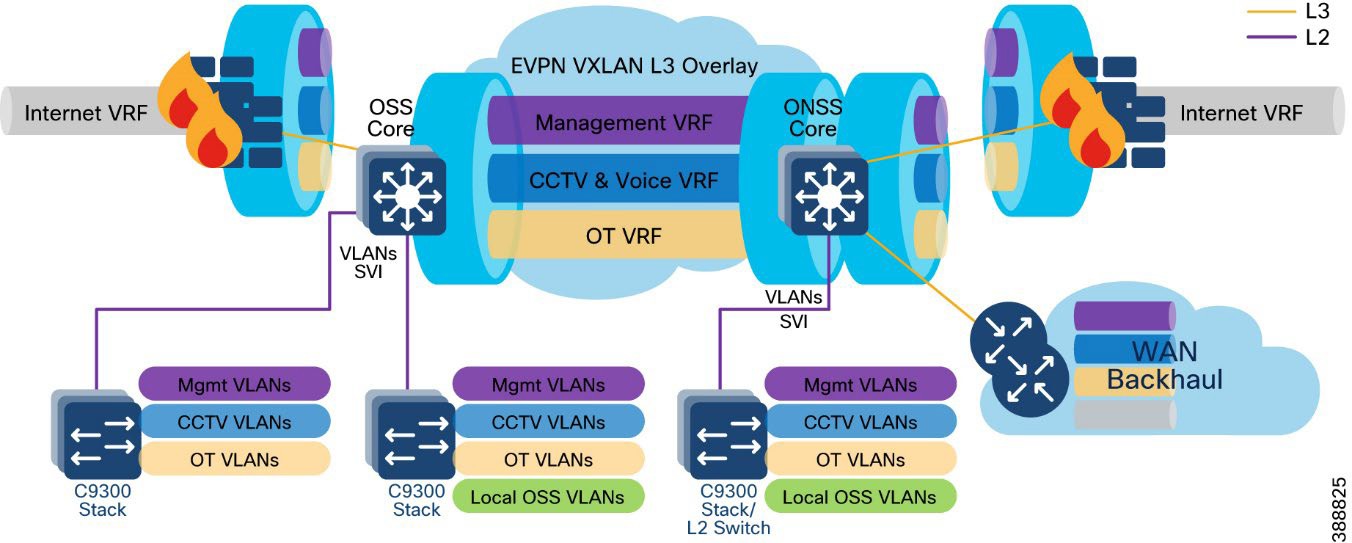

The VRF-lite feature is used along with Ethernet VPN - Virtual Extensible LAN (EVPN-VXLAN) design to provide a unified overlay network solution for layer 3 routing between OSS and ONSS core Catalyst 9500 Stackwise Virtual (SVL) switches per VRF. This provides a more scalable solution for traffic segregation (per VRF) while using a single control plane routing protocol to exchange VRF prefixes (BGP).

Figure 18 illustrates the layer 3 IP routing design in the wind farm architecture.


For more information, see Information about VRF-lite in IP Routing Configuration Guide, Cisco IOS XE Dublin 17.10.x (Catalyst 9500 Switches).

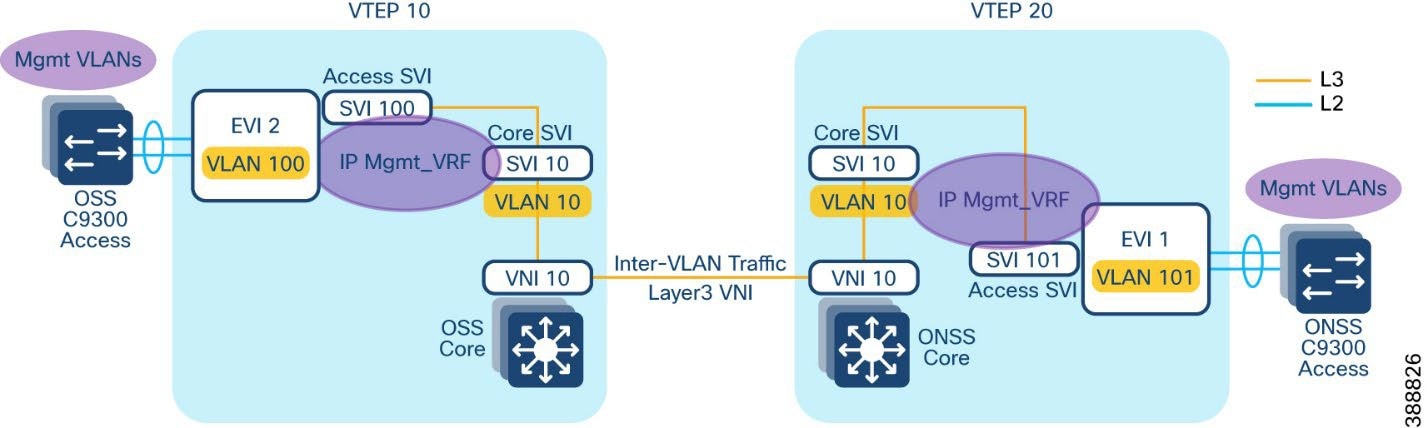

Ethernet VPN (EVPN) is a control plane for VXLAN that reduces flooding in the network and addresses the scalability challenges that the flood-and-learn mechanism creates in a VXLAN network. The control plane manages MAC address learning and VXLAN tunnel endpoint (VTEP) discovery. In BGP EVPN VXLAN deployments, the EVPN control plane provides the capability to exchange both MAC address and IP address information. EVPN uses Multi-Protocol Border Gateway Protocol (MP-BGP) as the routing protocol to distribute reachability information pertaining to the VXLAN overlay network, including endpoint MAC addresses, endpoint IP addresses, and subnet reachability information. Refer to the following URL for more details on BGP EVPN VXLAN design.

An EVPN VXLAN Layer 3 overlay network allows host devices in different Layer 2 networks to send Layer 3 or routed traffic to each other. The network forwards the routed traffic using a Layer 3 virtual network instance (VNI) and an IP VRF. The wind farm asset operator’s offshore and onshore substation (OSS and ONSS) core networks are configured in a two-VTEP topology without a spine switch, as shown in Figure 19.

In this design, a layer 3 overlay VXLAN network between OSS and ONSS core switches is configured per VRF (with BGP routing) to route inter-subnet traffic between these core switches using a layer 3 Virtual Network Identifier (L3VNI). A Layer 3 VNI and a VTEP are configured per VRF in the wind farm network. Figure 19 shows the movement of traffic in an EVPN VXLAN Layer 3 overlay network using a Layer 3 VNI for a VRF in the wind farm network.
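The per-VRF Layer 3 VNI arrangement between the two core VTEPs can be sketched as a small data model. The following Python example is a conceptual illustration, not BGP EVPN: the class, the loopback addresses, the VNI number, and the prefixes are all hypothetical, and the lookup is an exact match on the prefix string rather than a longest-prefix match.

```python
from dataclasses import dataclass, field


@dataclass
class Vtep:
    """Toy VTEP holding one L3VNI per VRF and the routes learned from its peer."""
    name: str
    loopback: str
    l3vni_by_vrf: dict[str, int]
    local_prefixes: dict[str, list[str]] = field(default_factory=dict)
    remote_routes: dict[tuple[str, str], tuple[str, int]] = field(default_factory=dict)

    def advertise_to(self, peer: "Vtep") -> None:
        # Stand-in for the control plane exchange: each local prefix is
        # advertised with the VRF's L3VNI and this VTEP as the next hop.
        for vrf, prefixes in self.local_prefixes.items():
            vni = self.l3vni_by_vrf[vrf]
            if peer.l3vni_by_vrf.get(vrf) != vni:
                continue  # peer has no matching VRF and L3VNI, so it ignores the route
            for prefix in prefixes:
                peer.remote_routes[(vrf, prefix)] = (self.loopback, vni)

    def lookup(self, vrf: str, prefix: str):
        # Returns (remote VTEP address, L3VNI to encapsulate with) or None.
        return self.remote_routes.get((vrf, prefix))


# Hypothetical values for the OSS and ONSS core switches.
vnis = {"ot": 50001, "management": 50002}
oss = Vtep("oss-core", "10.0.0.1", vnis, {"ot": ["10.10.0.0/24"]})
onss = Vtep("onss-core", "10.0.0.2", vnis, {"ot": ["10.20.0.0/24"]})
oss.advertise_to(onss)
onss.advertise_to(oss)
```

After the exchange, the ONSS VTEP knows that the OSS prefix in the OT VRF is reachable through the OSS VTEP using that VRF's L3VNI, and the same prefix is not visible from any other VRF.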


Refer to the following URL for more information about EVPN VXLAN Layer 3 overlay network.

BGP EVPN VXLAN Network Design for Turbine Network

As an alternative to a Layer 2 switched network design for the wind farm operator’s turbine network, a Layer 3 based BGP EVPN VXLAN network provides the following benefits:

● Eliminates Layer 2 domain with REP in FAN ring

● Provides deterministic Layer-3 transport

● Enables a scalable fabric based L2 extension (overlay) between turbines and OSS core

● Provides VRF-based network segmentation

● Ensures fast convergence (sub-second target)

● Provides operational clarity for field troubleshooting

This section describes a BGP EVPN VXLAN based network design option for the wind farm operator’s turbine network. This option can be preferred over a layer 2 turbine network design, depending on the wind turbine deployment and the ease of operations required.

Turbine network Underlay Design

An underlay network is the physical network over which the virtual overlay network is established. Once the overlay network is defined along with the data-plane encapsulation, a method of transport is required to move the data across the physical network underneath. This method of transport is typically an underlay transport network, or simply the underlay.

In BGP EVPN VXLAN, the underlay Layer 3 network transports the VXLAN-encapsulated packets between the source and destination VTEPs and provides reachability between them. The VXLAN overlay and the underlying IP network between the VTEPs are independent of each other.

The underlay network for BGP EVPN VXLAN consists of routed inter-switch links between turbine base and OSS aggregation switches. This provides deterministic L3 fault domains in the FAN ring (fast reconvergence, scalable routing), while keeping the nacelle/base access simple and rugged.

Figure 20 illustrates the underlay physical network design for the turbine network.


As shown in Figure 20, following are the underlay network design considerations for the turbine network, to deploy a BGP EVPN VXLAN overlay network across OSS and turbine switches.

Turbine Base Switches (IE3500) as L3-connected leaf sites: Each turbine base switch participates in the L3 Farm Area Network (FAN) ring toward the aggregation layer.

It is recommended to connect two turbine base switches per FAN ring

Ring Aggregation Switches (IE9320) as L3 transit in a redundant pair:

1. Deploy IE9320s as a pair with an L3 Port-Channel between them (east-west redundancy)

2. Uplink that IE9320 pair to OSS Core (C9500 SVL) using L3 Port-Channels for underlay redundancy

OSPF as the underlay routing protocol: OSPF provides network reachability between the OSS core, the OSS aggregation switches (IE9320), and the turbine base switches, with a consistent MTU across the OSPF domain. It is recommended to configure a physical MTU size of 9198 bytes on all switches participating in the BGP EVPN VXLAN overlay fabric.

Turbine Nacelle Switches (IE3400) stay L2-attached to the base: Use Layer-2 Port-Channel from IE3400 (nacelle) to IE3500 (base) for access resiliency while keeping the FAN ring purely L3.
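The MTU recommendation above can be sanity-checked with simple arithmetic. The sketch below assumes an IPv4 underlay and an untagged inner frame, in which case VXLAN adds 50 bytes to the largest host IP packet (inner Ethernet header, VXLAN header, outer UDP header, outer IPv4 header). The interface names and the `audit_mtu` helper are hypothetical and are used only for illustration.

```python
# Bytes added on top of the host IP packet once it is VXLAN-encapsulated
# (IPv4 underlay, no inner 802.1Q tag assumed).
INNER_ETHERNET = 14
VXLAN_HEADER = 8
OUTER_UDP = 8
OUTER_IPV4 = 20
ENCAP_OVERHEAD = INNER_ETHERNET + VXLAN_HEADER + OUTER_UDP + OUTER_IPV4  # 50 bytes

RECOMMENDED_MTU = 9198  # physical MTU recommended in this guide


def required_underlay_mtu(host_ip_mtu: int) -> int:
    """Smallest underlay IP MTU that carries a full-size host packet unfragmented."""
    return host_ip_mtu + ENCAP_OVERHEAD


def audit_mtu(interface_mtu: dict, host_ip_mtu: int = 1500) -> dict:
    """Flag interfaces that are too small for VXLAN or deviate from the recommendation."""
    needed = required_underlay_mtu(host_ip_mtu)
    return {
        "too_small": sorted(n for n, m in interface_mtu.items() if m < needed),
        "not_recommended": sorted(n for n, m in interface_mtu.items() if m != RECOMMENDED_MTU),
    }


# Hypothetical inventory: one turbine base uplink was left at the default MTU.
report = audit_mtu({"core-po1": 9198, "agg-po1": 9198, "base-uplink1": 1500})
print(report)
```

A consistent value matters for OSPF as well as for VXLAN: OSPF neighbors compare interface MTU during database exchange, so a mismatched link can prevent the adjacency from forming.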

Turbine network VXLAN Overlay Design

An overlay network is a virtual network that is built over an existing Layer 2 or Layer 3 network by forming a static or dynamic tunnel that runs on top of the physical network infrastructure. The existing Layer 3 network is what forms the underlay and is covered in the previous section, “Turbine network underlay design”.

When a data packet is sent through an overlay, the original packet or frame is packaged or encapsulated at a source edge device with an outer header and dispatched toward an appropriate destination edge device. The intermediate network devices forward the packet based on the outer header but are not aware of the data in the original packet. At the destination edge device, the packet is decapsulated by stripping off the overlay header and then forwarded based on the actual data within.

In the context of BGP EVPN VXLAN, VXLAN is used as the overlay technology to encapsulate the data packets and tunnel the traffic over a Layer 3 network. VXLAN creates a Layer 2 overlay network by using a MAC-in-UDP encapsulation. A VXLAN header is added to the original Layer 2 frame, and the result is then placed within a UDP-IP packet. A VXLAN overlay network is also called a VXLAN segment. Only host devices and virtual machines within the same VXLAN segment can communicate with each other.

● Each VXLAN segment is identified through a 24-bit segment ID, termed the VXLAN network identifier (VNI). This ensures that up to 16 million VXLAN segments can be present within the same administrative domain.

● Every VXLAN segment has tunnel edge devices known as Virtual Tunnel End points (VTEPs). These devices sit at the edge of the VXLAN network and are responsible for creating instances of VXLAN tunnels, and for performing VXLAN encapsulation and decapsulation.

● A VTEP has a switch interface on the local LAN segment to support local endpoint communication through bridging, and an IP interface to interact with the transport IP network.

● The IP interface has a unique IP address that identifies the VTEP on the transport IP network. The VTEP uses this IP address to encapsulate Ethernet frames and transmits the encapsulated packets to the transport network through the IP interface.

● A VTEP device also discovers the remote VTEPs for its VXLAN segments and learns remote MAC address-to-VTEP mappings through its IP interface.
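The MAC-in-UDP encapsulation and the 24-bit VNI described above can be illustrated with a minimal sketch of the 8-byte VXLAN header as defined in RFC 7348. The function names and the example VNI are assumptions made for illustration; a real VTEP performs this in hardware and also adds the outer UDP, IP, and Ethernet headers, which are omitted here.

```python
import struct

VXLAN_UDP_PORT = 4789        # IANA-assigned destination UDP port for VXLAN
VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag: the VNI field carries a valid value
MAX_VNI = (1 << 24) - 1      # 24-bit VNI space, i.e. 16,777,216 segments


def build_vxlan_header(vni: int) -> bytes:
    """Return the 8-byte VXLAN header for the given VNI."""
    if not 0 <= vni <= MAX_VNI:
        raise ValueError(f"VNI {vni} does not fit in 24 bits")
    # Word 1: flags (8 bits) + reserved (24 bits)
    # Word 2: VNI (24 bits) + reserved (8 bits)
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from an 8-byte VXLAN header."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI-valid flag is not set")
    return vni_word >> 8


def encapsulate(inner_frame: bytes, vni: int) -> bytes:
    """Prepend the VXLAN header to the original Layer 2 frame (outer headers omitted)."""
    return build_vxlan_header(vni) + inner_frame


# Hypothetical L2VNI for a turbine VLAN.
header = build_vxlan_header(10100)
print(header.hex(), parse_vni(header), MAX_VNI + 1)
```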

Figure 21 illustrates BGP EVPN VXLAN Overlay design for the turbine network.

An EVPN VXLAN Layer 2 overlay network allows host devices in the same subnet to send bridged or Layer 2 traffic to each other. The network forwards the bridged traffic using a Layer 2 virtual network instance (VNI).

As shown in Figure 21, the following are the VXLAN overlay design considerations for the turbine network. The topology shows an EVPN VXLAN network with two VTEPs (the OSS core switch as VTEP 1 and the IE3500 turbine base switch as VTEP 2) and no spine switches. Ingress replication is performed between the VTEPs to forward BUM traffic in the network.

● The OSS core switch (C9500 SVL) acts as a leaf VTEP, and the turbine base switches (IE3500) in a two-turbine routed ring act as leaf VTEPs. In a two-VTEP topology, a spine switch is not mandatory.

● VXLAN overlay extends Layer 2 from turbine base switches (IE3500) to OSS core (C9500 SVL) with IE9320 OSS aggregation switches as transit switches in the overlay network.

● It is recommended to configure L2VNIs for the VLANs extended from a turbine base switch to the OSS core, and for the VLANs extended from the OSS infra network to the OSS core, with a separate L2VPN in BGP per VRF.

● An L3VNI is configured for routing inter-VLAN traffic between the OSS and ONSS core switches, as discussed in the section “Network VLANs and Routing Design”.
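The ingress replication behavior mentioned above can be sketched as a simple forwarding decision on a VTEP. This is a conceptual model only, not device code: the `Vtep` class, the loopback addresses, and the VNI value are hypothetical. In EVPN, the per-VNI flood list is typically populated from route type 3 (inclusive multicast) advertisements and remote MAC entries from route type 2 (MAC/IP) advertisements.

```python
class Vtep:
    """Toy model of a leaf VTEP that uses ingress (head-end) replication."""

    def __init__(self, name: str, ip: str):
        self.name = name
        self.ip = ip
        self.flood_list = {}  # VNI -> set of remote VTEP IPs
        self.mac_table = {}   # (VNI, MAC) -> remote VTEP IP

    def learn_remote_vtep(self, vni: int, remote_ip: str) -> None:
        """Record a remote VTEP that participates in this VNI."""
        self.flood_list.setdefault(vni, set()).add(remote_ip)

    def learn_remote_mac(self, vni: int, mac: str, remote_ip: str) -> None:
        """Record which remote VTEP a MAC address sits behind."""
        self.mac_table[(vni, mac.lower())] = remote_ip

    def forward(self, vni: int, dst_mac: str) -> list:
        """Return the remote VTEP IPs that receive a unicast copy of the frame."""
        known = self.mac_table.get((vni, dst_mac.lower()))
        if known is not None:
            return [known]  # known unicast: one tunnel
        # BUM traffic: replicate one copy per remote VTEP in the VNI
        return sorted(self.flood_list.get(vni, set()))


# Hypothetical addressing: OSS core plus two turbine base switches in VNI 10100.
core = Vtep("oss-core", "10.0.0.1")
core.learn_remote_vtep(10100, "10.0.0.11")
core.learn_remote_vtep(10100, "10.0.0.12")
core.learn_remote_mac(10100, "00:11:22:33:44:55", "10.0.0.11")

print(core.forward(10100, "ff:ff:ff:ff:ff:ff"))  # broadcast is replicated
print(core.forward(10100, "00:11:22:33:44:55"))  # known unicast uses one tunnel
```

Because each BUM frame is copied once per remote VTEP, ingress replication avoids the need for multicast in the underlay at the cost of extra copies on the ingress switch.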

Refer to the following URL, for more details on EVPN VXLAN Layer 2 overlay network.

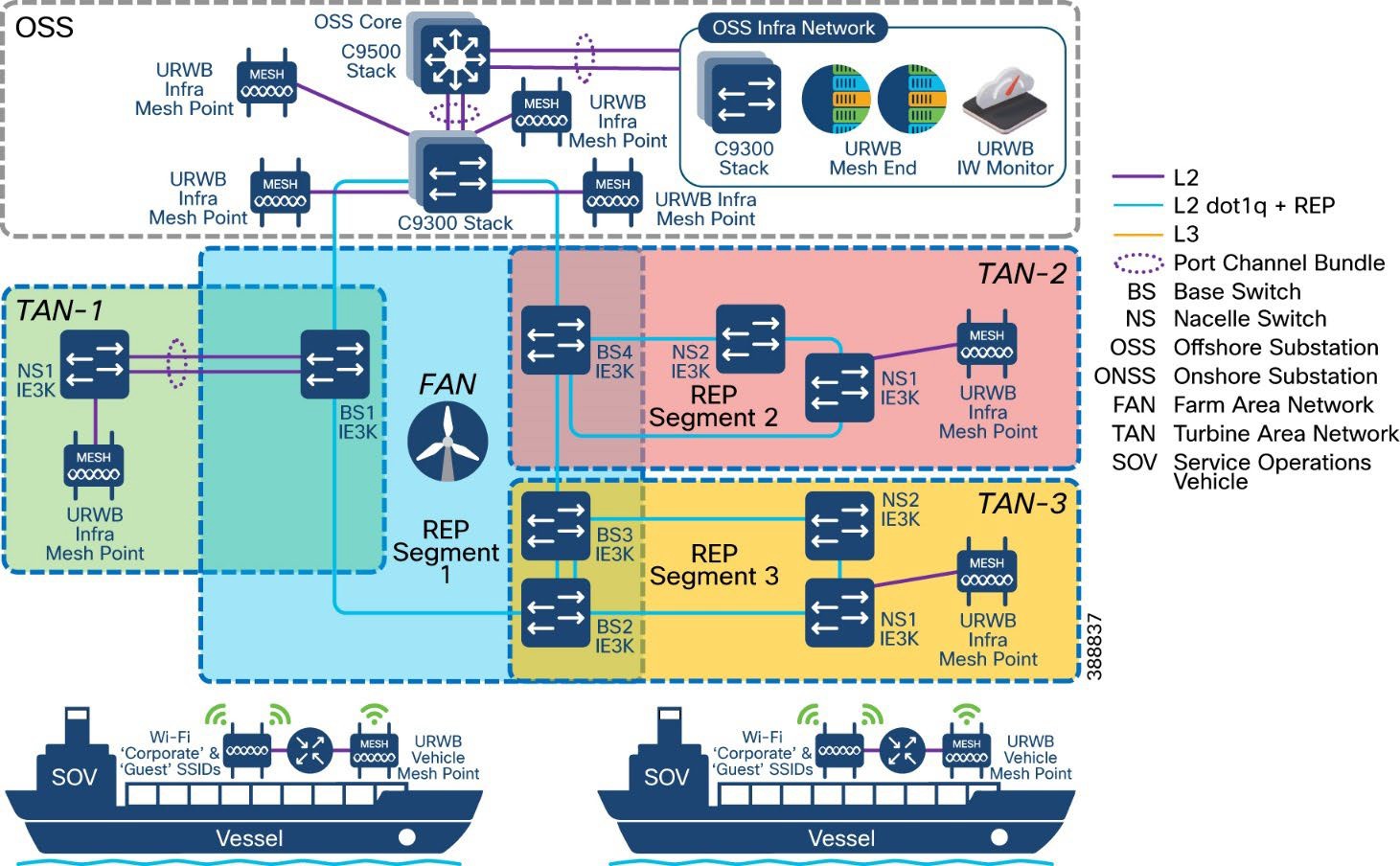

Figure 22 shows the offshore wind farm wireless architecture. This architecture includes URWB on the OSS and TAN for SOV connectivity and enterprise Wi-Fi on the OSS, TAN, FAN, and vessel. The following sections provide details about the URWB architecture.

This section provides an overview of the Cisco Wi-Fi deployment at an offshore wind farm for employee, contractor, and guest access on the OSS, FAN, and TAN. The wireless deployment leverages Cisco’s next-generation wireless controller, the Cisco Catalyst 9800 WLC, which is deployed within the OSS infra network and managed centrally via Cisco Catalyst Center at the control center. Micro-segmentation is provided by Cisco ISE and TrustSec.

Cisco Wi-Fi Architecture for Off-Shore Windfarm

Figure 23 shows the Wi-Fi architecture for an offshore wind farm deployment, which enables Wi-Fi access for employees, contractors, and guests on the OSS network, FAN, and TAN.

The Cisco Catalyst Center is located onshore within the control center. It has connectivity to the Cisco Catalyst 9800 WLC over a WAN connection. The Catalyst Center is used to centrally manage and configure the WLCs and APs. It can be used to view the health metrics of the wired and wireless networks within the offshore windfarm network.

The Catalyst Center is also used to configure the TrustSec matrix that is used for segmentation of user traffic. A Microsoft Windows server is located within the control center and provides the following functionality:

● Employee user identity store (group, username, password)

● Contractor user identity store (group, username, password)

● Certificate authority (CA)

● DNS server

● DHCP server (DHCP scopes for employee and guest wireless access)

The ISE server is collocated in the control center. The ISE server acts as the central identity and policy management server used for wireless IEEE 802.1X authentication and authorization. It assigns security group tags (SGTs) to clients. These tags are used for micro-segmentation. The ISE server is integrated with the Cisco Catalyst Center and the Catalyst 9800 WLC.

ISE also hosts the wireless guest portal for guest wireless access.

The appropriate firewall ports need to be opened on the enterprise firewall at the boundary of the control center for Catalyst Center to WLC communications (configuration and telemetry), ISE to WLC communication (IEEE 802.1X, TrustSec), and WLC to AD connectivity (DHCP, DNS ports).

The Cisco Catalyst 9800 WLCs are deployed as a redundant SSO high-availability pair within the OSS infra network and are connected to the Cisco Catalyst 9300 switches. The Wi-Fi deployment is managed using Catalyst Center, with Cisco ISE providing IEEE 802.1X wireless and guest access. A good practice is to use different WLC interfaces for wireless management (access port), wireless client traffic (trunk port), and guest user traffic (access port).

Cisco IW6300s (ruggedized APs), 9124s (enterprise APs), and the IW9167E/I are deployed in local mode on the OSS, FAN BS, or TAN NS to provide wireless access where needed. The Catalyst IW9167 provides more capacity in terms of throughput at the PHY level, offering speeds up to 5 Gbps on the Ethernet port and up to 10 Gbps on the SFP port, with a maximum data rate of 7.96 Gbps. In addition to offering dual operation modes (Wi-Fi and URWB) for flexibility, the IW9167 can operate in the 6 GHz band with its tri-radio design. The wireless traffic is carried over the CAPWAP tunnel from the APs to the WLC and dropped off in the appropriate client VLAN on the Catalyst 9300 switch within the OSS infra network.

A dedicated VLAN and subnet need to be assigned for wireless AP management. One or more VLANs and subnets need to be assigned for wireless client traffic. A dedicated VLAN and subnet need to be assigned for guest wireless traffic. The AP management VLAN needs to be trunked to every switch that has APs connected to it. It also needs to exist on the switches to which the WLCs are connected. The AP switch port can be configured as an access port in the AP management VLAN with spanning-tree portfast enabled.

The SVIs for the VLANs and subnets need to exist on the Cisco 9500 stack.
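The VLAN and subnet assignments described above can be drafted with a short planning sketch. All values here are hypothetical placeholders (the address block, VLAN IDs, and role names do not come from this guide); the sketch simply carves one subnet per role from a parent block and reserves the first usable address for the SVI.

```python
import ipaddress

# Hypothetical values for illustration only; substitute the site's own plan.
WIRELESS_BLOCK = ipaddress.ip_network("10.50.0.0/22")
VLAN_ROLES = {
    "ap-management": 510,
    "employee-clients": 520,
    "contractor-clients": 530,
    "guest-clients": 540,
}


def build_vlan_plan(block, roles, prefix_len=24):
    """Assign one subnet per role and reserve the first host address for the SVI."""
    subnets = list(block.subnets(new_prefix=prefix_len))
    if len(subnets) < len(roles):
        raise ValueError("address block is too small for the requested roles")
    plan = {}
    for (role, vlan_id), subnet in zip(roles.items(), subnets):
        plan[role] = {
            "vlan": vlan_id,
            "subnet": subnet,
            "svi": subnet.network_address + 1,  # first usable address
        }
    return plan


plan = build_vlan_plan(WIRELESS_BLOCK, VLAN_ROLES)
for role, entry in plan.items():
    print(f"{role:20} VLAN {entry['vlan']}  {entry['subnet']}  SVI {entry['svi']}")
```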

Different user groups need to be created for employee users and contractors within Microsoft Active Directory (AD) or another LDAP server of your choice. Employee and contractor users need to be created in Microsoft AD or LDAP server and assigned to the appropriate groups. Unique scalable group tags (SGTs) need to be assigned for the employee, contractor, and guest user groups within ISE.

The employee SGT is usually configured to provide full access to all the enterprise services that employees require to perform their job functions. The contractor SGT usually provides limited access, either only to the services that contractors need for their function or only to the internet if that is all they require. The guest SGT provides internet access only. These policies can be defined and configured on the Cisco Catalyst Center and pushed to the ISE. The ISE then pushes these policies to the WLC.

When a wireless client is connected and is authenticated by ISE, the IP-SGT binding is generated on the controller.
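The relationship between user groups, SGTs, the policy matrix, and the IP-SGT binding can be modeled with a small sketch. The tag numbers, destination group names, and function names below are hypothetical and chosen only for illustration; actual tag values and contracts are defined in Catalyst Center and ISE.

```python
# Hypothetical source tags for the three user groups named in this section.
SOURCE_SGT = {"employee": 10, "contractor": 20, "guest": 30}

# Hypothetical destination tags.
DEST_SGT = {"enterprise-services": 100, "contractor-services": 110, "internet": 120}

# (source SGT, destination SGT) pairs that are permitted; anything else is denied.
PERMIT_MATRIX = {
    (10, 100), (10, 110), (10, 120),  # employee: full access
    (20, 110), (20, 120),             # contractor: limited access
    (30, 120),                        # guest: internet only
}

ip_sgt_bindings = {}  # client IP -> SGT, created after successful authentication


def on_client_authenticated(client_ip: str, user_group: str) -> int:
    """Record the IP-SGT binding for a client that has been authenticated."""
    tag = SOURCE_SGT[user_group]
    ip_sgt_bindings[client_ip] = tag
    return tag


def is_permitted(client_ip: str, destination: str) -> bool:
    """Look up the matrix cell for this client's tag and the destination tag."""
    source_tag = ip_sgt_bindings.get(client_ip)
    if source_tag is None:
        return False  # no binding, so no access
    return (source_tag, DEST_SGT[destination]) in PERMIT_MATRIX


on_client_authenticated("10.50.1.23", "employee")
on_client_authenticated("10.50.3.40", "guest")
print(is_permitted("10.50.1.23", "enterprise-services"))
print(is_permitted("10.50.3.40", "enterprise-services"))
```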

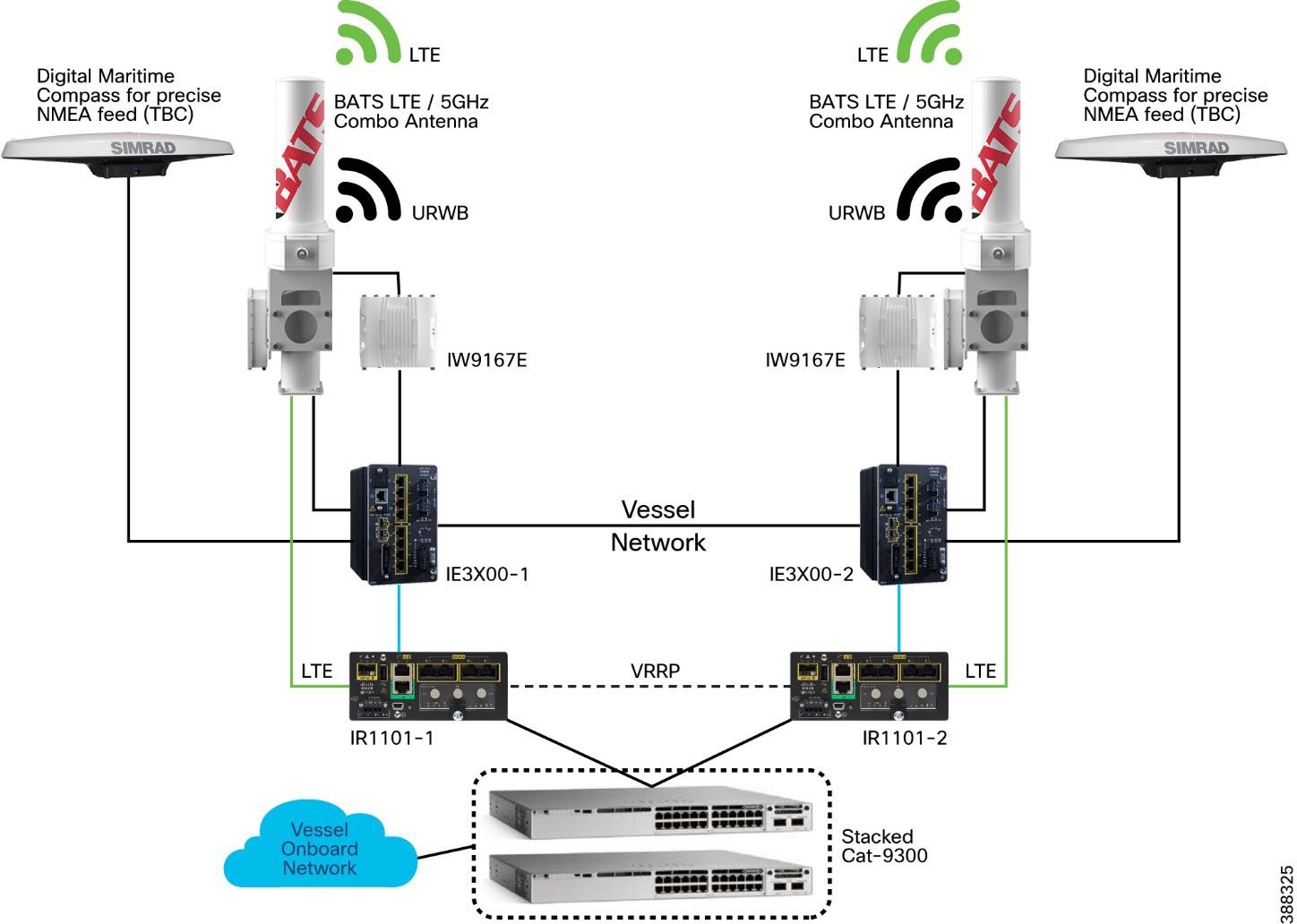

Use case for Service Operations Vessel Wireless Backhaul within a Wind Farm

There is a need for a reliable, high-bandwidth wireless backhaul solution that connects to the large Service Operations Vessels (SOVs) and smaller crew transfer vessels (CTVs), both of which move staff around an offshore wind farm estate. During periods near shore, a vessel should use public cellular connectivity.

This section provides an overview of URWB technology, the wireless network components needed to build out the wind farm solution, and the high-level and low-level architecture that supports connectivity from SOVs and CTVs to the OSS network.

The following high-level requirements are met by this CVD:

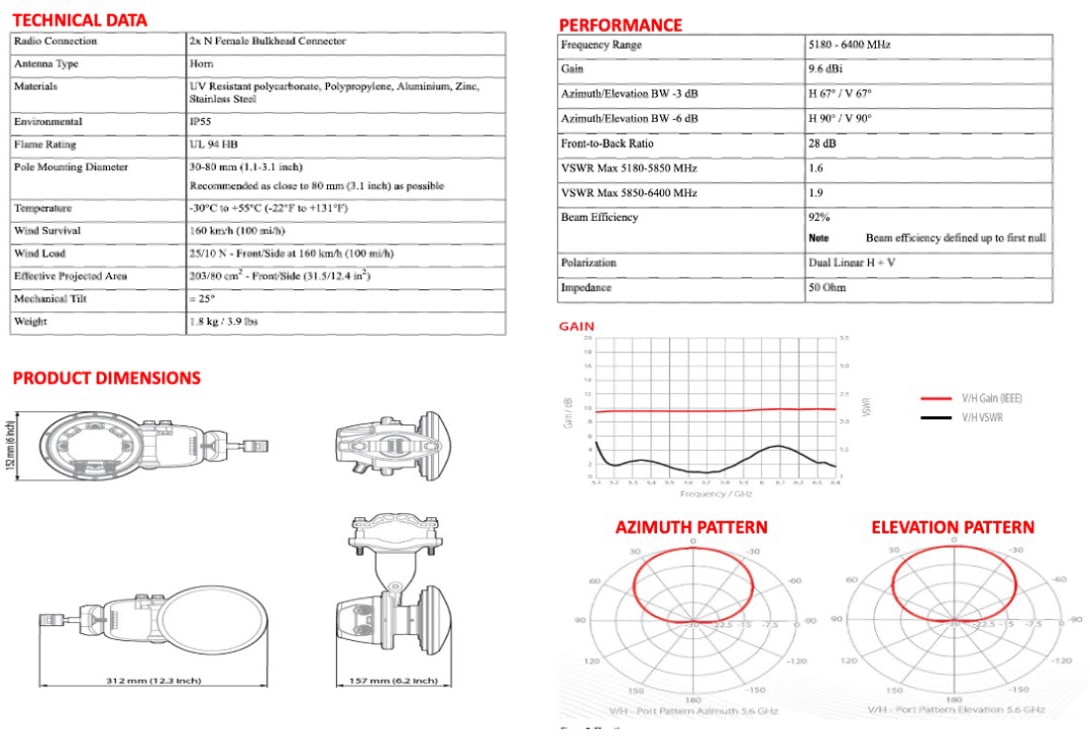

● Reliable wireless backhaul connectivity to vessels that are within a 10 km radius of an OSS platform using URWB radios.

● The head end or wayside is on the OSS.

● Vessels can switch to cellular connectivity when in range of onshore cellular networks.

● Vessels have specialist antennas with appropriate radios and modems.

● Antennas on vessels automatically adjust their direction to optimize the radio signals for best performance by using a GPS feed to dynamically change the beam direction.

● Antennas on vessels are combination antennas that support 5 GHz URWB wireless and public LTE.

● Target throughput: 30 to 50 Mbps for vessels that are within a 10 km radius of an OSS.

● Support for connectivity to a public LTE network when a vessel is going to or from a harbor, extending to a few miles offshore.

● IP telephony extended to a vessel, with Cisco Survivable Remote Site Telephony (SRST) preferred onboard for periods when no OSS connectivity is available.

● Corporate and guest user networks to be extended to the SOV using fixed and Wi-Fi connections.

The Catalyst IW9167 Series addresses the growing need to provide reliable wireless connectivity for mission-critical applications as organizations automate processes and operations. It comes with three 4x4 radios in a heavy-duty design that is IP67 rated and packed with advanced features. Cisco URWB provides up to 99.995% stability with <10 ms latency, zero packet loss, and seamless roaming. For a detailed overview of Cisco’s URWB, see the IW9167 datasheet.

The following key technologies form the foundation of the URWB solution:

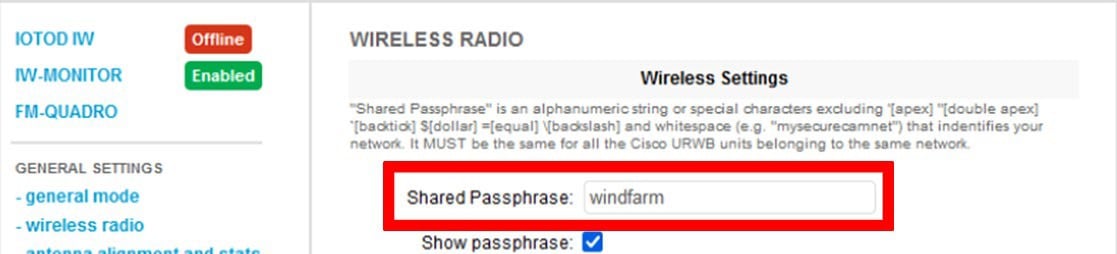

● Prodigy 2.0: MPLS-based transmission protocol built to overcome the limits of standard wireless protocols.

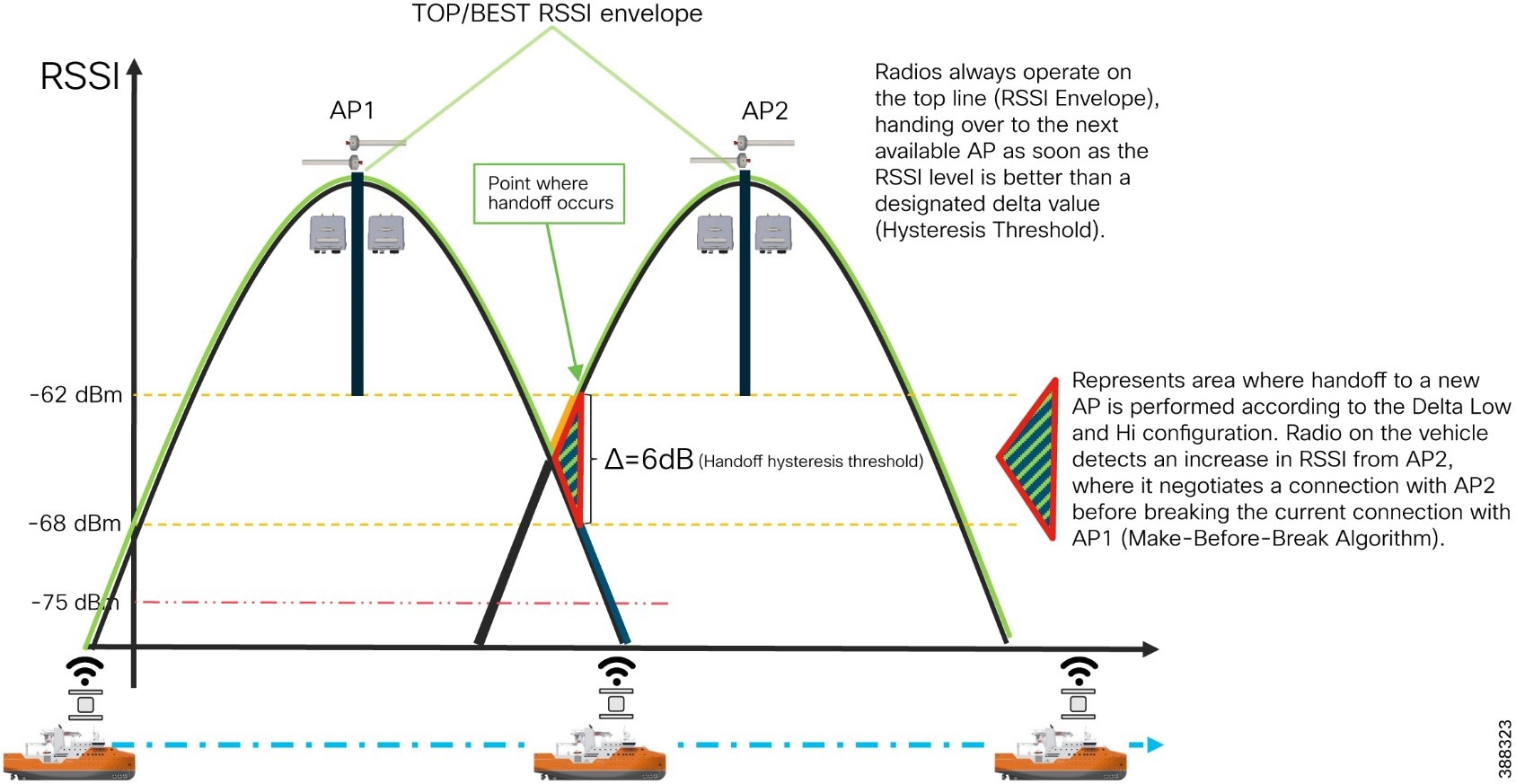

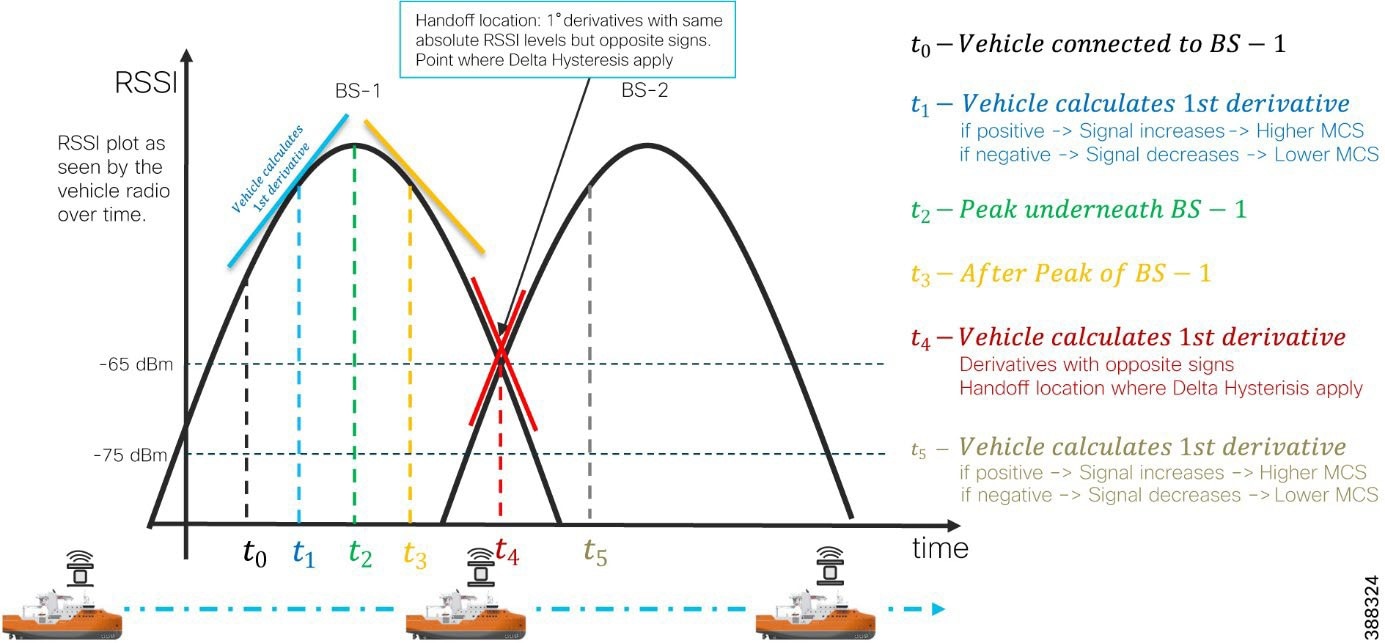

● Fluidity: Proprietary fast-roaming algorithm for vehicle-to-wayside communication with a 0 ms roam delay and no roam loss for speeds up to 200 mph (360 km/h).

● Fast-failover high-availability mechanism that provides hardware redundancy and carrier-grade availability.

URWB uses the proprietary wireless-based MPLS transmission protocol Prodigy to discover and create label-switched paths (LSPs) between mesh-point radios and mesh ends. Prodigy helps make wireless mesh networks resilient, in both fixed and mobility deployments. MPLS provides an end-to-end packet delivery service operating between Layer 2 and Layer 3 of the OSI network stack. It relies on label identifiers, rather than on the network destination address as in traditional IP routing, to determine the sequence of nodes to be traversed to reach the end of the path.
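The label-based forwarding idea can be illustrated with a generic label-switching sketch. This is not the Prodigy protocol, whose internals are not described here; the node names, label values, and table layout are hypothetical. Each node looks up only the incoming label, swaps it, and hands the packet to the next hop until the label is popped at the end of the path.

```python
# Hypothetical per-node label tables: incoming label -> (action, outgoing label, next hop)
LABEL_TABLES = {
    "mesh-point-A": {100: ("swap", 200, "mesh-point-B")},
    "mesh-point-B": {200: ("swap", 300, "mesh-end")},
    "mesh-end": {300: ("pop", None, None)},
}


def trace_lsp(tables, ingress_node, ingress_label, max_hops=16):
    """Follow a label-switched path and return the (node, label) pairs traversed."""
    node, label = ingress_node, ingress_label
    path = [(node, label)]
    for _ in range(max_hops):
        action, out_label, next_hop = tables[node][label]
        if action == "pop":
            return path  # end of the LSP: the label is removed here
        node, label = next_hop, out_label
        path.append((node, label))
    raise RuntimeError("hop limit exceeded; possible label loop")


lsp = trace_lsp(LABEL_TABLES, "mesh-point-A", 100)
print(lsp)
```

Note that no node consults a destination IP address; the path is fully determined by the labels installed when the LSP was created.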

Fluidity enables a vehicle that is moving between multiple infrastructure APs to maintain end-to-end connectivity with seamless handoffs between APs. Vehicle radios negotiate with the infrastructure APs and form a new wireless connection to a more favorable infrastructure AP with better signal quality before breaking or losing their currently active wireless connections.
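The make-before-break behavior described above can be sketched as a simple decision loop. This is an illustrative model under stated assumptions, not the Fluidity algorithm itself: the `VehicleRadio` class, the AP names, and the 6 dB hysteresis margin are hypothetical. The key property shown is that the new link is established before the old one is released.

```python
class VehicleRadio:
    """Toy make-before-break handoff model driven by per-AP signal readings."""

    def __init__(self, hysteresis_db: float = 6.0):
        self.hysteresis_db = hysteresis_db  # margin required before switching
        self.active = None                  # currently active infrastructure AP
        self.events = []                    # ordered log of link events

    def evaluate(self, rssi_dbm: dict) -> None:
        """Process one set of readings (AP name -> RSSI in dBm)."""
        if not rssi_dbm:
            return
        best = max(rssi_dbm, key=rssi_dbm.get)
        if self.active is None:
            self.active = best
            self.events.append(("connect", best))
            return
        current = rssi_dbm.get(self.active, float("-inf"))
        if best != self.active and rssi_dbm[best] >= current + self.hysteresis_db:
            old = self.active
            self.events.append(("connect", best))    # make the new link first
            self.active = best
            self.events.append(("disconnect", old))  # then break the old one


radio = VehicleRadio()
radio.evaluate({"oss-ap-1": -60, "oss-ap-2": -75})  # initial attach
radio.evaluate({"oss-ap-1": -68, "oss-ap-2": -65})  # better, but within the margin
radio.evaluate({"oss-ap-1": -75, "oss-ap-2": -62})  # clearly better: hand off
print(radio.active, radio.events)
```

The hysteresis margin prevents the radio from flapping between two APs of similar signal quality, which matters for a vessel moving slowly near the midpoint between two OSS radios.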

Hardware Redundancy and High-Availability