Scenario

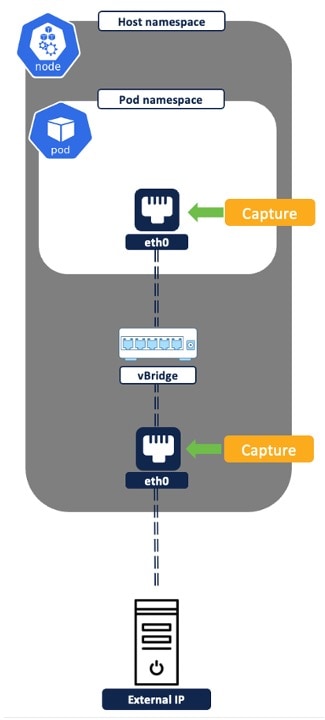

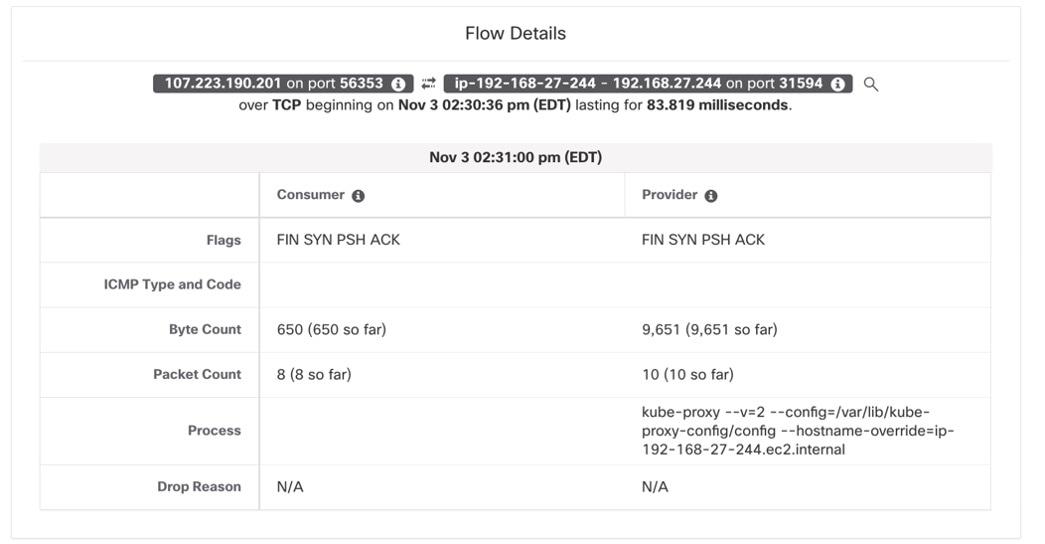

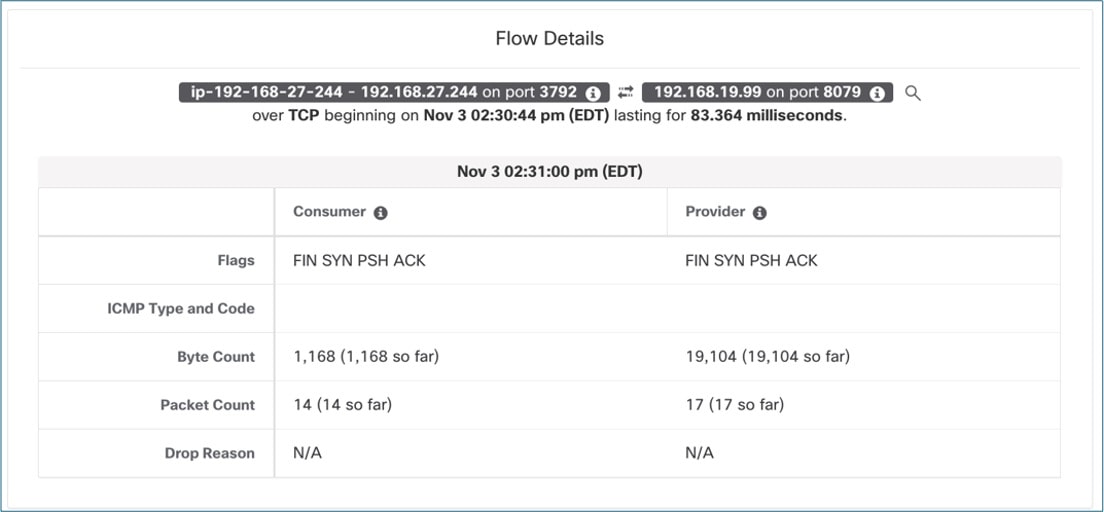

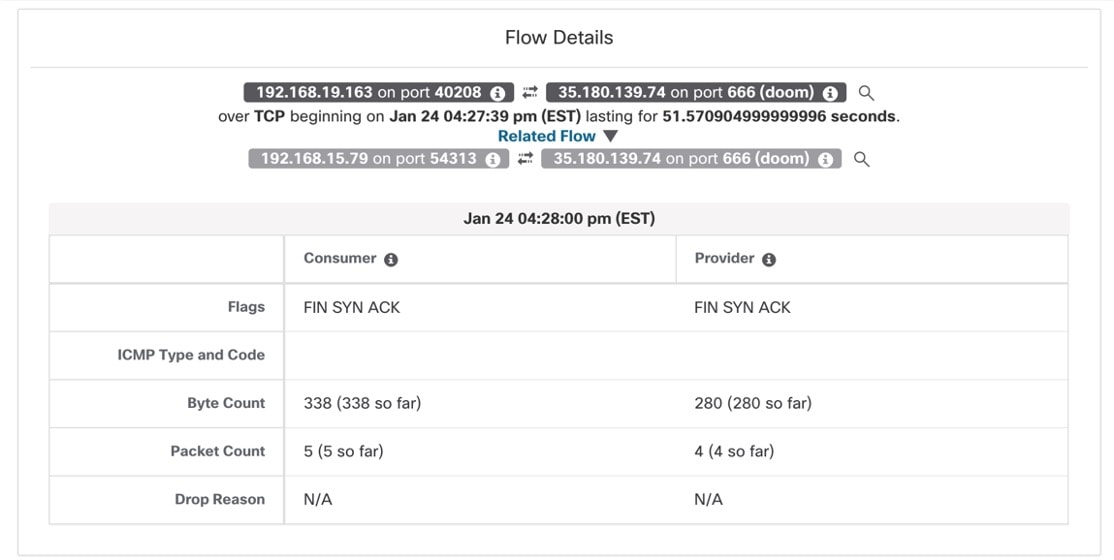

A network security engineer at an enterprise financial organization is responsible for securing Kubernetes or OpenShift clusters. The challenges include enforcing network policies, segmenting traffic, mitigating vulnerabilities, handling complex network architectures, and continuously monitoring for emerging security threats within and between clusters.

Is this use case for you?

This use case is intended for DevOps engineers, security analysts, and cloud and network security engineers. Their responsibilities include continuously monitoring and securing containerized environments, and using container security tools to detect and respond to threats and vulnerabilities.
