Scenario

Modern enterprise applications run in hybrid multicloud environments that include bare-metal servers, virtual machines, and containerized workloads orchestrated by Kubernetes. This complexity makes it difficult to secure applications and data without sacrificing operational agility. Cisco Secure Workload addresses this challenge by bringing security closer to applications through microsegmentation and zero-trust principles. It uses machine learning and behavioral analysis to tailor security policies to workload behavior. This enables organizations to:

-

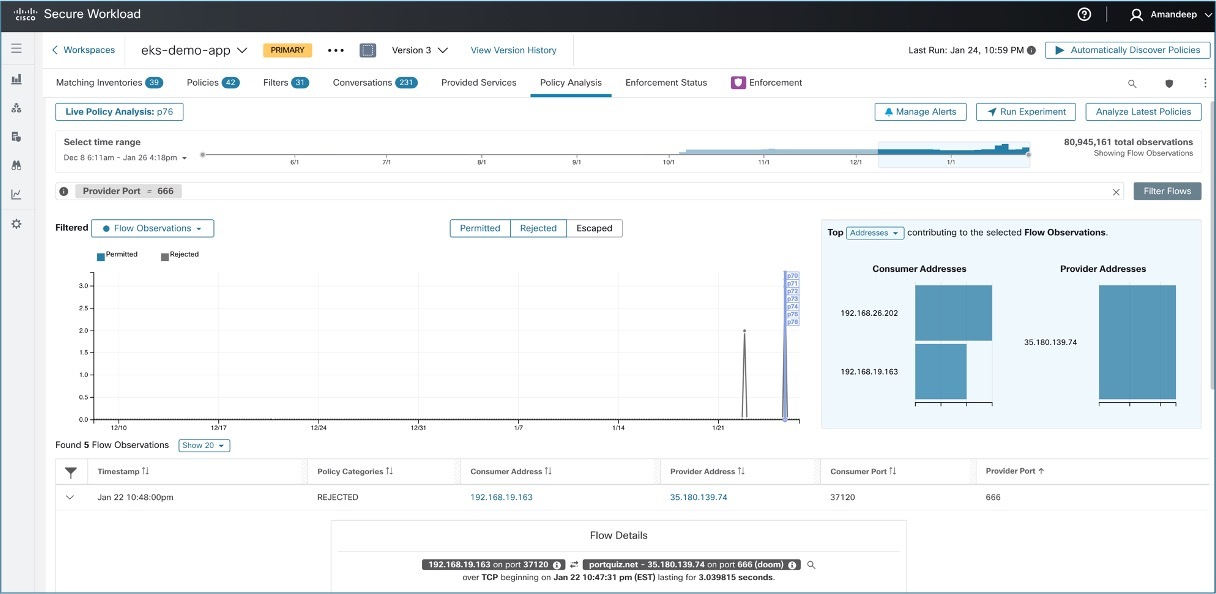

Allow only business-required traffic through microsegmentation policies.

-

Detect anomalies and potential threats via behavioral baselining.

-

Identify vulnerabilities in software packages installed on workloads.

-

Quarantine compromised workloads to prevent lateral movement.

-

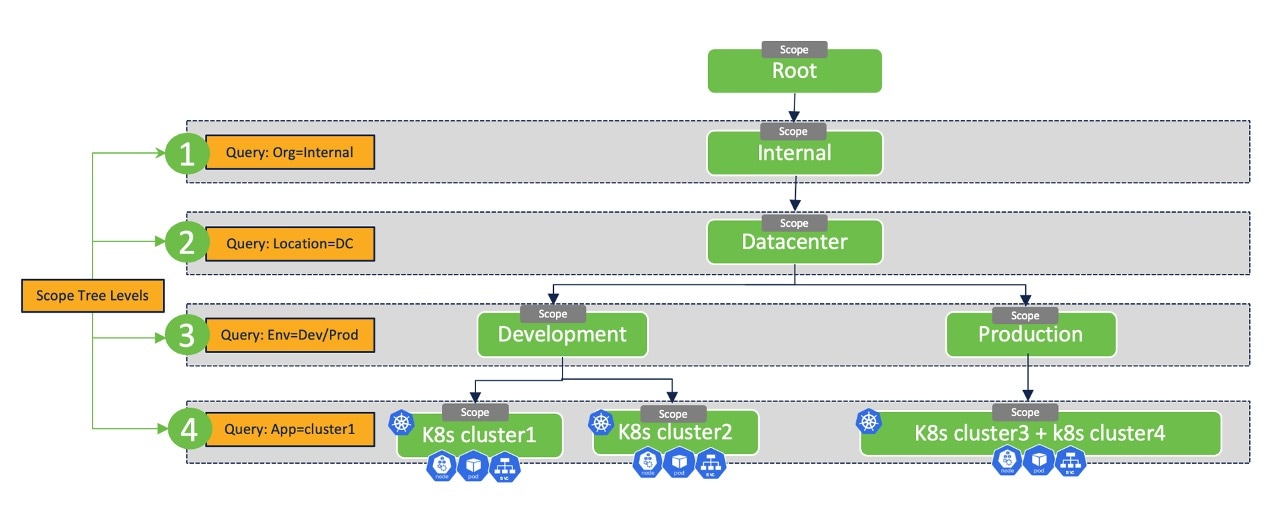

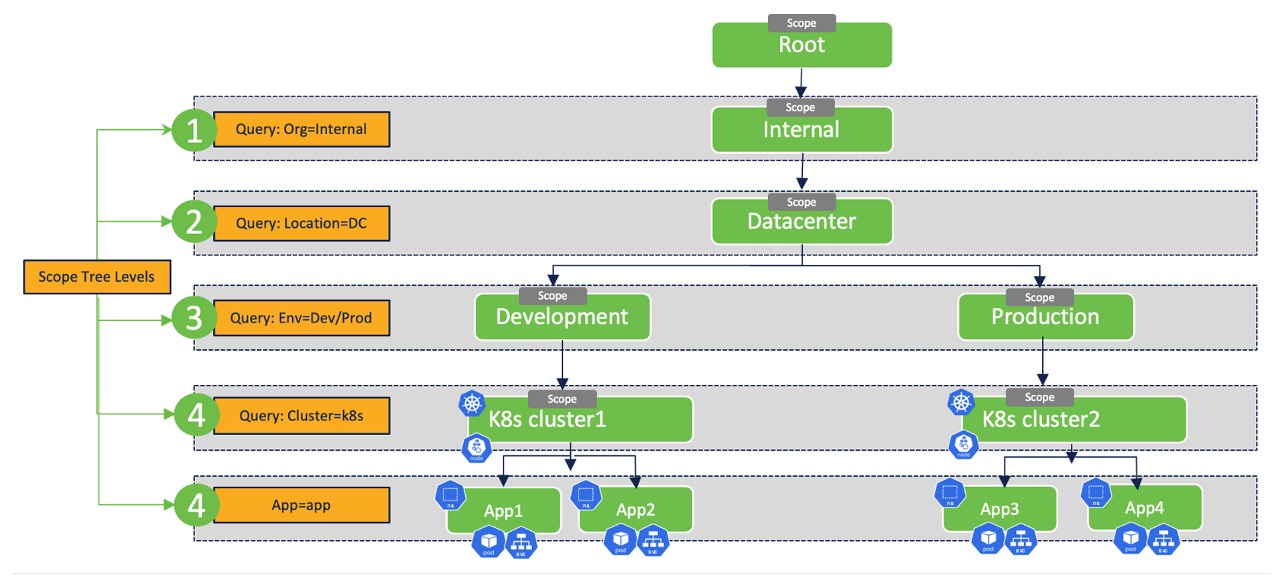

Enforce zero-trust security and microsegmentation in Kubernetes environments.
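To make the first capability concrete, the following is a minimal sketch of allow-list (default-deny) evaluation, the general idea behind microsegmentation. It is an illustration only and does not represent Cisco Secure Workload's API or policy format. The workload labels (`web`, `app`, `db`), the ports, and the names `AllowRule` and `is_allowed` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AllowRule:
    """One business-required flow (hypothetical structure for illustration)."""
    source: str
    destination: str
    port: int


# Hypothetical allow list: only these flows are considered business-required.
ALLOW_RULES = frozenset({
    AllowRule("web", "app", 8443),
    AllowRule("app", "db", 5432),
})


def is_allowed(source: str, destination: str, port: int,
               rules: frozenset = ALLOW_RULES) -> bool:
    """Default deny: a flow is permitted only if an explicit rule matches it."""
    return AllowRule(source, destination, port) in rules


if __name__ == "__main__":
    # The web tier may reach the app tier on the listed port.
    print(is_allowed("web", "app", 8443))
    # The web tier has no rule to reach the database directly, so it is denied.
    print(is_allowed("web", "db", 5432))
```

Because every flow without an explicit rule is denied, a compromised `web` workload in this sketch cannot reach `db` directly, which is the kind of lateral movement that microsegmentation is meant to limit.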

Is this use case for you?

This scenario is intended for security architects, network administrators, and cloud architects who manage and configure network infrastructure. They may be involved in defining and implementing network policies that segment and control communication among components within a Kubernetes cluster.
