Prerequisites for geo-redundancy

Before you enable geo-redundancy (GR) between sites, complete these requirements:

-

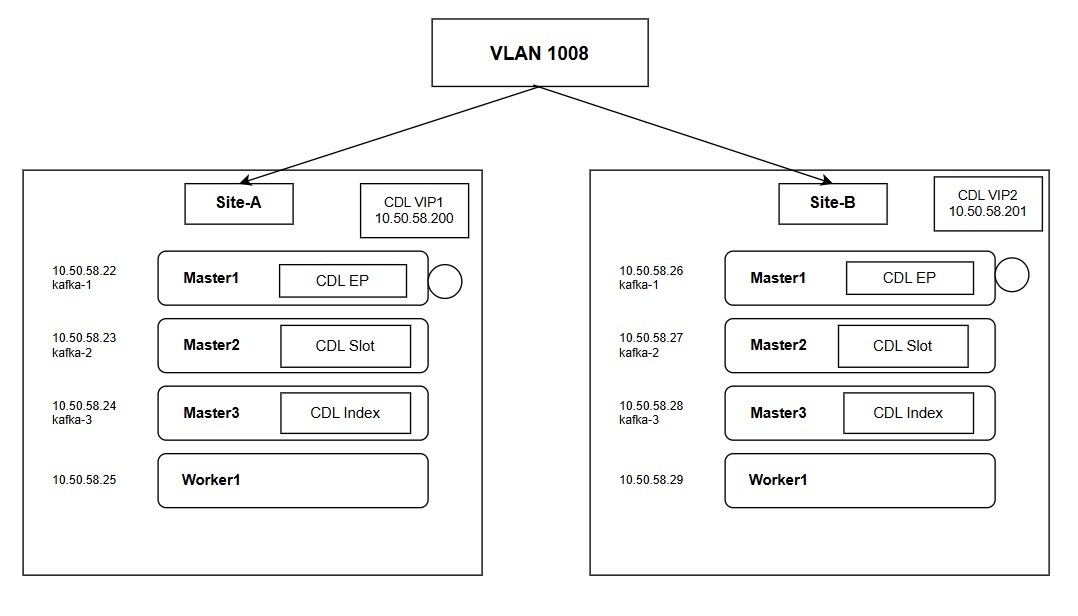

Network Configuration: Configure the replication network VLAN on all master nodes at both sites.

-

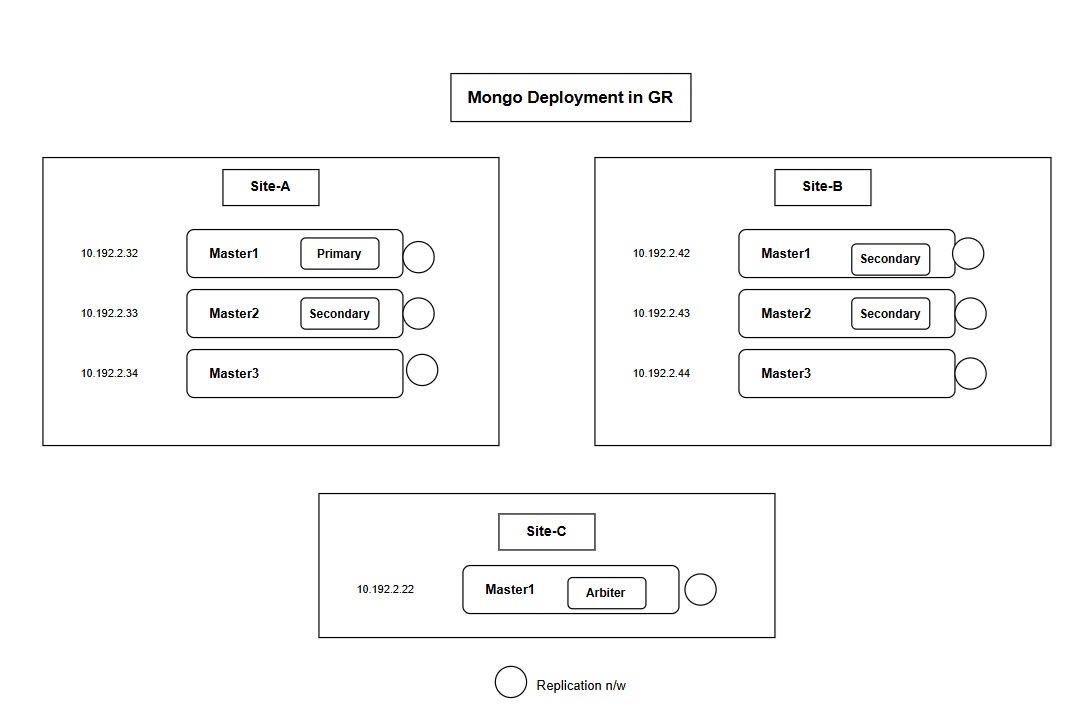

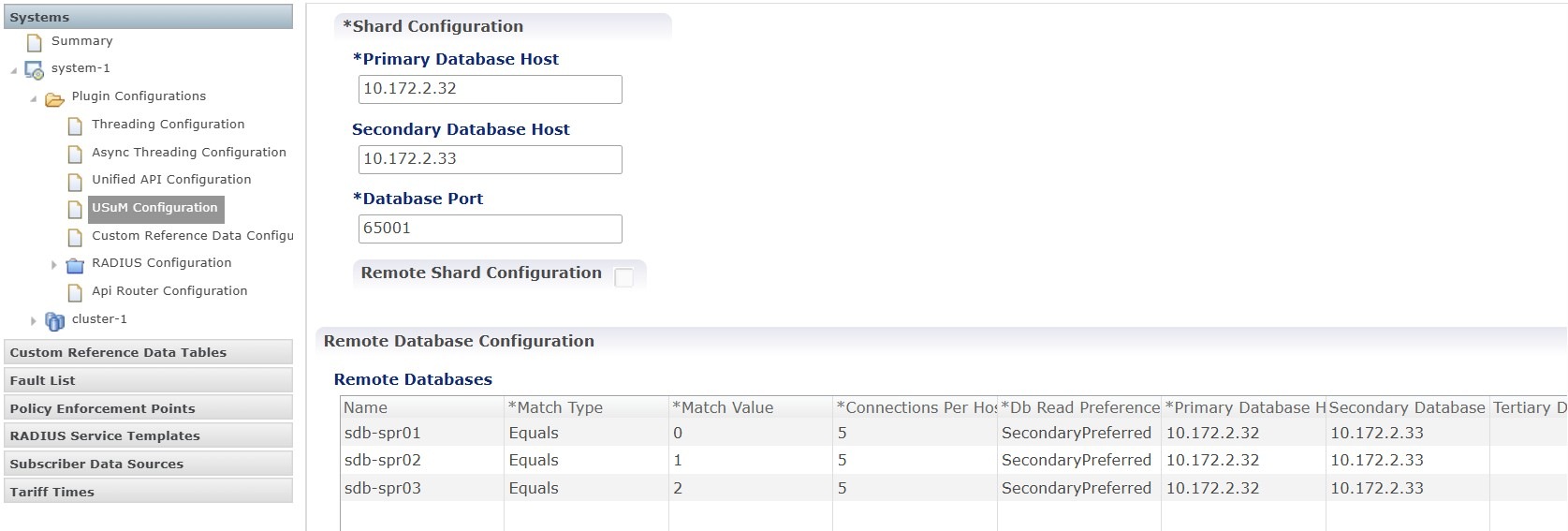

IP Allocation: Allocate IP addresses based on the number of replica sets configured for both the MongoDB and CDL data-store endpoints.

- Timing: Complete all network configurations and IP allocations before you enable GR to prevent replication issues.
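The prerequisites above can be sketched as a pre-flight check that reports what still blocks enabling GR. This is a minimal Python sketch under stated assumptions: the `Site` class, its fields, and the `gr_blockers` helper are hypothetical, the site state is supplied by hand rather than read from a cluster, and the VLAN ID, node names, IP counts, and addresses in the usage lines are placeholders.

```python
from __future__ import annotations

from dataclasses import dataclass, field


# Hypothetical model of one site's state; field names are illustrative only.
@dataclass
class Site:
    name: str
    # Maps each master node name to the set of VLAN IDs configured on it.
    master_vlans: dict[str, set[int]]
    # Number of IPs this site needs (see the allocation table in this section).
    required_ips: int
    allocated_ips: list[str] = field(default_factory=list)


def gr_blockers(sites: list[Site], replication_vlan: int) -> list[str]:
    """Return the reasons GR should not be enabled yet; an empty list means ready."""
    blockers = []
    for site in sites:
        # Network configuration: every master node needs the replication VLAN.
        for node, vlans in sorted(site.master_vlans.items()):
            if replication_vlan not in vlans:
                blockers.append(f"{site.name}: VLAN {replication_vlan} missing on {node}")
        # IP allocation: count distinct addresses against the requirement.
        allocated = len(set(site.allocated_ips))
        if allocated < site.required_ips:
            blockers.append(f"{site.name}: {allocated} of {site.required_ips} IPs allocated")
    return blockers


# Placeholder values for illustration only.
site_a = Site("Site-A", {"master-1": {100}, "master-2": {100}}, 2, ["192.0.2.1", "192.0.2.2"])
site_b = Site("Site-B", {"master-1": {100}, "master-2": set()}, 2, ["192.0.2.10"])
for reason in gr_blockers([site_a, site_b], replication_vlan=100):
    print(reason)
```

Checking both sites in one pass mirrors the timing requirement: GR is enabled only when the returned list is empty for every site.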

Note: The number of MongoDB replica sets for each datastore endpoint determines the total number of IP addresses required for the deployment.

IP address allocation for datastore endpoints

For example, a site that deploys one MongoDB replica set with two local members and one CDL endpoint with three Kafka replicas requires six IP addresses: one for the CDL endpoint VIP, three for the Kafka replicas, and two for the MongoDB members.
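The count in this example can be written as a small formula. The Python sketch below assumes, as the example and the allocation table suggest, one IP per MongoDB data member, one VIP per CDL endpoint, and one IP per Kafka replica, and that these counts scale linearly with the number of replica sets and endpoints. The function name and parameters are hypothetical, and the arbiter at the third site is not counted.

```python
def site_ip_count(
    mongo_replica_sets: int,
    mongo_members_per_set: int,
    cdl_endpoints: int,
    kafka_replicas_per_endpoint: int,
) -> int:
    """Estimate the IPs one site needs (hypothetical helper, linear-scaling assumption)."""
    # One IP per local MongoDB data member; the arbiter lives at a third site.
    mongo_ips = mongo_replica_sets * mongo_members_per_set
    # One VIP per CDL endpoint so the remote site can reach the local CDL datastore.
    cdl_vip_ips = cdl_endpoints
    # One IP per Kafka replica used for CDL replication.
    kafka_ips = cdl_endpoints * kafka_replicas_per_endpoint
    return mongo_ips + cdl_vip_ips + kafka_ips


print(site_ip_count(1, 2, 1, 3))  # 6
```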

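Note: To reduce the total number of IP addresses required, you can configure the three Kafka replicas on a single IP address and assign a unique port number to each replica. In the preceding example, this reduces the Kafka requirement from three IP addresses to one.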
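One way to plan the shared-address layout described in the note is to give every replica the same external IP and a distinct port. This Python sketch is hypothetical: the `kafka_listeners` helper is illustrative, and the choice of consecutive ports starting from 10091 (the port that appears in the CDL Kafka configuration reference in the table that follows) is an assumption, not a documented requirement.

```python
import ipaddress


def kafka_listeners(external_ip: str, base_port: int, replicas: int) -> list[tuple[str, int]]:
    """Assign one shared external IP and a unique, consecutive port to each Kafka replica."""
    # Raises ValueError if external_ip is not a valid IPv4 or IPv6 address.
    ip = str(ipaddress.ip_address(external_ip))
    last_port = base_port + replicas - 1
    if base_port < 1 or last_port > 65535:
        raise ValueError("port range out of bounds")
    # Consecutive ports are an illustrative choice; any unique ports would do.
    return [(ip, base_port + i) for i in range(replicas)]


listeners = kafka_listeners("10.50.58.22", 10091, 3)
print(listeners)  # [('10.50.58.22', 10091), ('10.50.58.22', 10092), ('10.50.58.22', 10093)]
```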

This table describes the IP address requirements for a single site in an active-active geo-redundant deployment.

| Datastore endpoint | Number of IPs required | Purpose | Configuration reference |
|---|---|---|---|
| CDL endpoint VIP | 1 | Enables the remote site (Site-B) to access the CDL datastore on the local site (Site-A) for data synchronization. | `cdl datastore session endpoint external-ip 10.50.58.200` |
| CDL Kafka replicas | 3 | Used for CDL replication. | `cdl kafka external-ip 10.50.58.22 10091` |
| MongoDB members | 2 | Required for the data members on each site. This assumes two MongoDB members per site and an arbiter placed at a third site. | `member-configuration member sdb-rs1-s1-m1 host 10.192.2.32` |
