Adaptive Solutions with Cisco UCS X215c M8 and Hitachi VSP One Block E2E 100G VMware

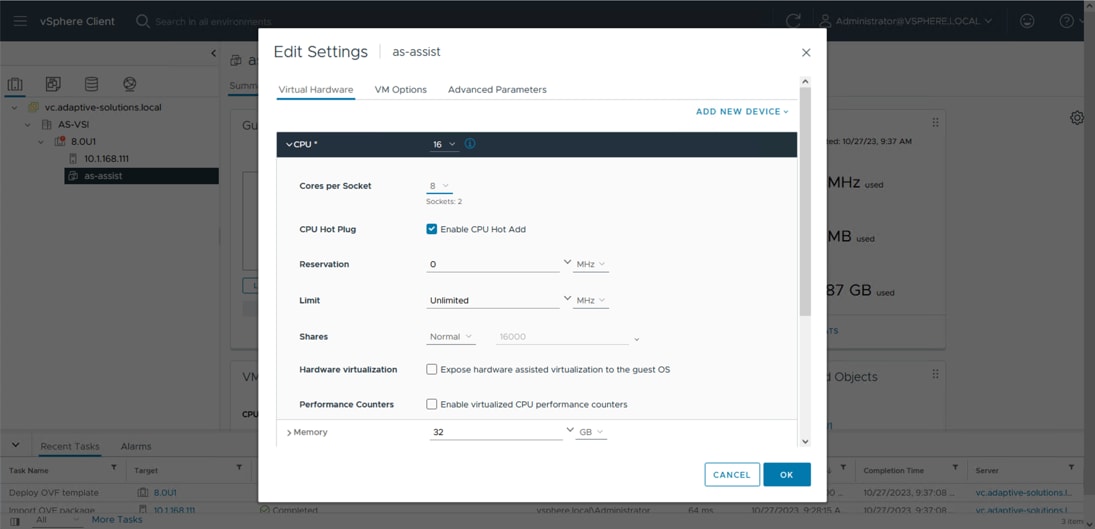


In partnership with Hitachi Vantara.

About the Cisco Validated Design Program

The Cisco Validated Design (CVD) program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, go to: http://www.cisco.com/go/designzone.

Executive Summary

Cisco Validated Designs consist of systems and solutions that are designed, tested, and documented to facilitate and improve customer deployments. These designs incorporate a wide range of technologies and products into a portfolio of solutions that have been developed to address the business needs of our customers.

Cisco and Hitachi have joined forces to provide a converged infrastructure solution designed to address the current challenges faced by enterprise businesses and to position them for future success. Drawing on their extensive industry knowledge and innovative technology, the two companies present in this collaborative Cisco CVD a robust, flexible, and agile foundation for today's businesses. Moreover, the partnership between Cisco and Hitachi goes beyond a single solution, allowing businesses to take advantage of an ambitious roadmap of progressive technologies, including advanced analytics, IoT, cloud, and edge capabilities. By partnering with Cisco and Hitachi, organizations can confidently embark on their modernization journey and position themselves to capitalize on emerging business opportunities.

This document explains how to deploy the Cisco and Hitachi Adaptive Solutions for Converged Infrastructure as a Virtual Server Infrastructure (VSI), as described in the Adaptive Solutions with Cisco UCS X215c M8 and Hitachi VSP One Block E2E 100G VMware Design Guide. The recommended solution architecture is built on the Cisco Unified Computing System (Cisco UCS), using the unified software release that supports Cisco UCS X-Series servers and Cisco UCS X-Series Direct Fabric Interconnects, together with Cisco Nexus 9000 Series switches and the Hitachi Virtual Storage Platform (VSP) One Block 24, which delivers high-performance storage over NVMe over TCP (NVMe/TCP). The architecture runs on VMware vSphere 8.0 Update 3, the leading virtual server platform for enterprise customers.

Additional Cisco Validated Designs created in a partnership between Cisco and Hitachi can be found here: https://cisco.com/go/as-cvds

Solution Overview

This chapter contains the following:

● Audience

Modernizing your data center can be overwhelming, and it’s vital to select a trusted technology partner with proven expertise. With Cisco and Hitachi as partners, companies can build for the future by enhancing systems of record, supporting systems of innovation, and growing their business. Organizations need an agile solution, free from operational inefficiencies, to deliver continuous data availability, meet SLAs, and prioritize innovation.

Cisco and Hitachi Adaptive Solutions for Converged Infrastructure as a Virtual Server Infrastructure (VSI) is a best-practice datacenter architecture built on the collaboration of Hitachi Vantara and Cisco to meet the needs of enterprise customers running virtual server workloads. This architecture is composed of the Hitachi VSP One Block series connected through the Cisco Nexus 9300 switches, supporting the NVMe over TCP (NVMe/TCP) storage protocol, to Cisco Unified Computing System X-Series Servers managed through Cisco Intersight.

These deployment instructions are based on the buildout of the Cisco and Hitachi Adaptive Solutions for Converged Infrastructure validated reference architecture, which uses the specific products in the Cisco validation lab. The solution also applies to equivalent supported components listed in the Cisco and Hitachi Vantara published compatibility matrices. Evaluate any supported deviation from the validated build carefully, because its implementation steps may differ.

The intended audience of this document includes, but is not limited to, IT architects, sales engineers, field consultants, professional services, IT managers, partner engineering, and customers who want to take advantage of an infrastructure built to deliver IT efficiency and enable IT innovation.

This document provides a step-by-step configuration and implementation guide for the Cisco and Hitachi Adaptive Solutions for Converged Infrastructure solution. This solution features a validated reference architecture composed of:

● Cisco UCS Compute

● Cisco Nexus Switches

● Hitachi Virtual Storage Platform

For the design decisions and technology discussion of the solution, see the Adaptive Solutions with Cisco UCS X215c M8 and Hitachi VSP One Block E2E 100G VMware Design Guide.

The following design elements distinguish this version of the Adaptive Solutions Virtual Server Infrastructure from previous models:

● Cisco UCS X215c M8 servers with 5th Gen AMD EPYC™ processors, supporting up to 96 cores per processor and up to 6TB of DDR5-6400 DIMMs

● 100 Gbps Ethernet end-to-end

● Integration of the Cisco UCS X-Series Direct 9108-100G Fabric Interconnect into Adaptive Solutions

● VMware vSphere 8.0 Update 3g

● NVMe/TCP connectivity to the Hitachi VSP One storage array providing VMFS datastores

● M.2 boot LUNs

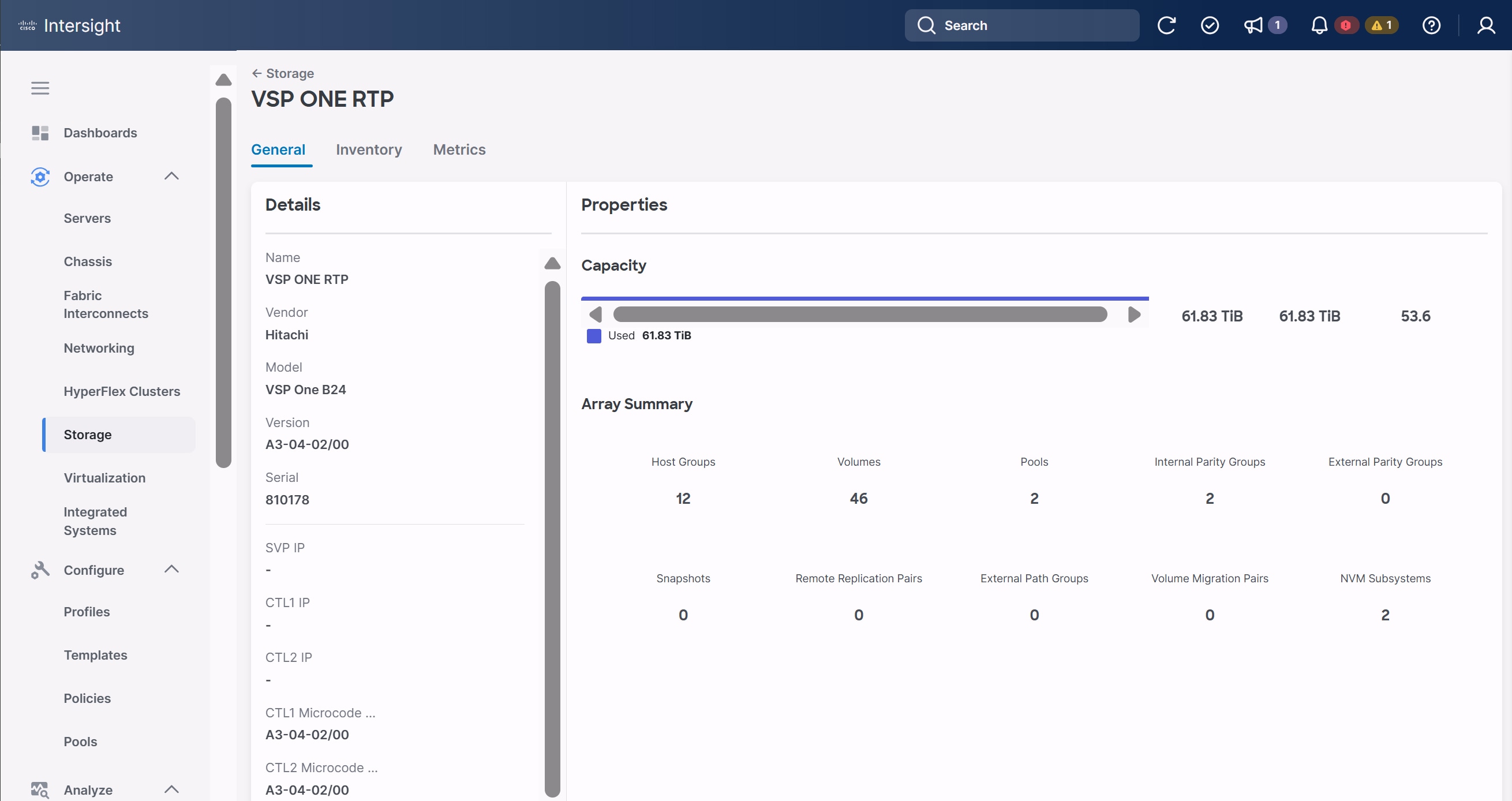

● Hitachi Virtual Storage Platform (VSP) One Block 24

● Hitachi Vantara VSP One Block Storage Modules for Red Hat Ansible

Deployment Hardware and Software


The Adaptive Solutions Virtual Server Infrastructure consists of a high-performance network built using the following hardware components:

● Cisco UCS X9508 Chassis with Cisco UCS X-Series Direct Fabric Interconnects, supporting 8/16/32-Gbps Fibre Channel and 10/25/40/100 Gigabit Ethernet connectivity, and up to eight Cisco UCS X215c M8 Compute Nodes with 4th or 5th Generation AMD EPYC™ CPUs.

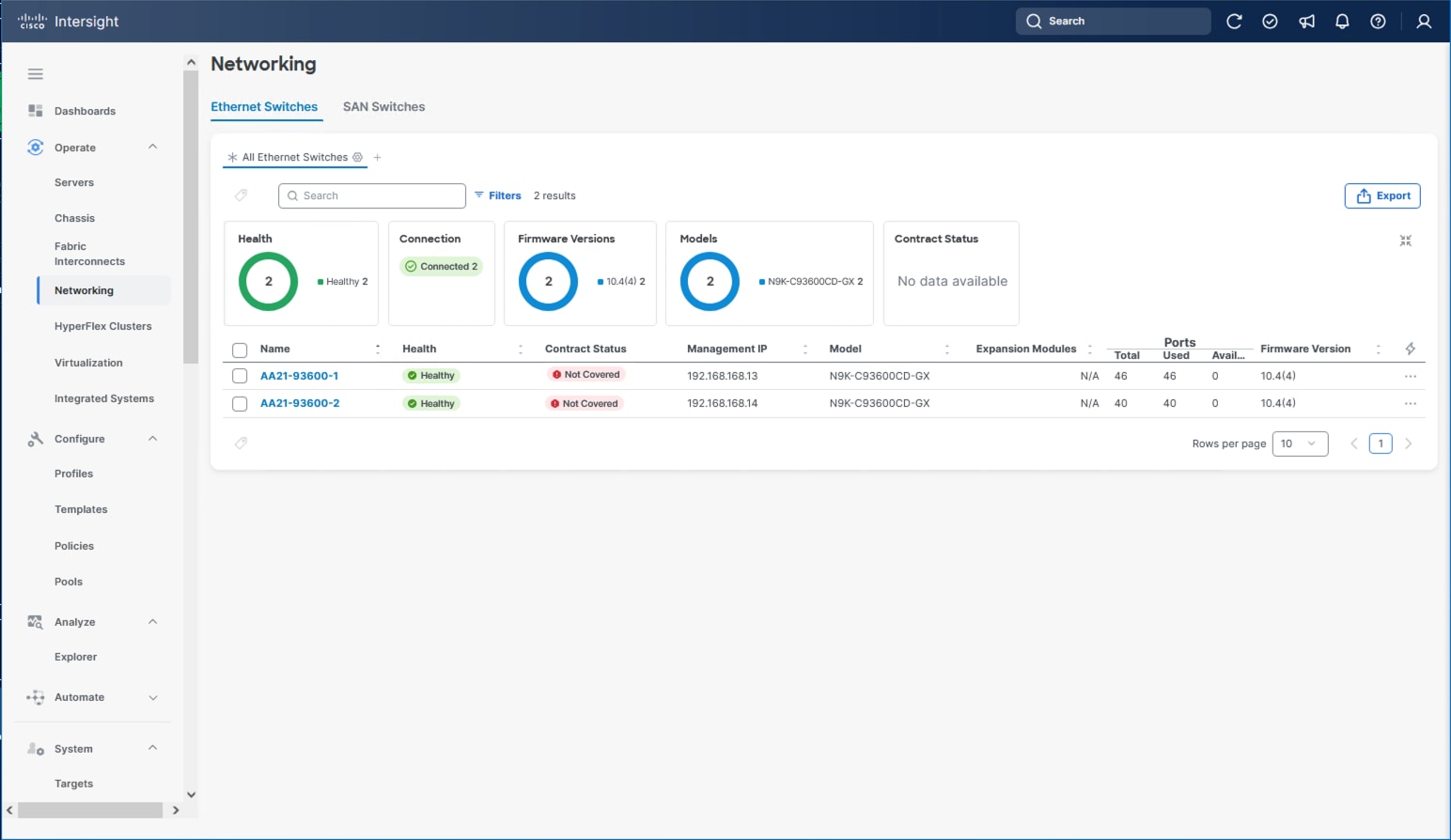

● High-speed Cisco NX-OS-based Nexus 93600CD-GX switching design supporting up to 400G.

● Hitachi VSP One Block with 100G NVMe/TCP connectivity.

The software components of the solution consist of:

● Cisco Intersight SaaS platform to deploy, maintain, and support the Adaptive Solutions infrastructure.

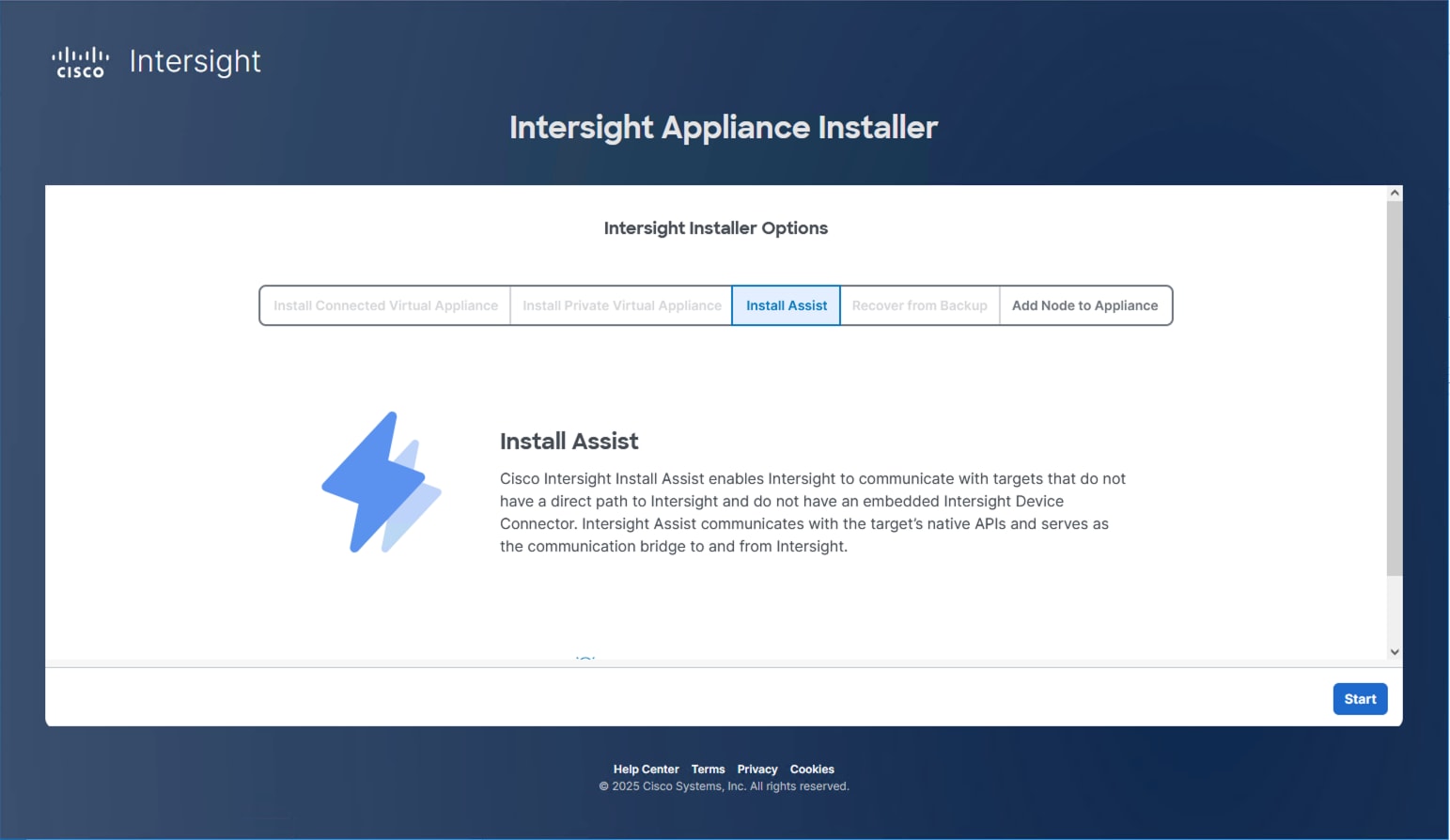

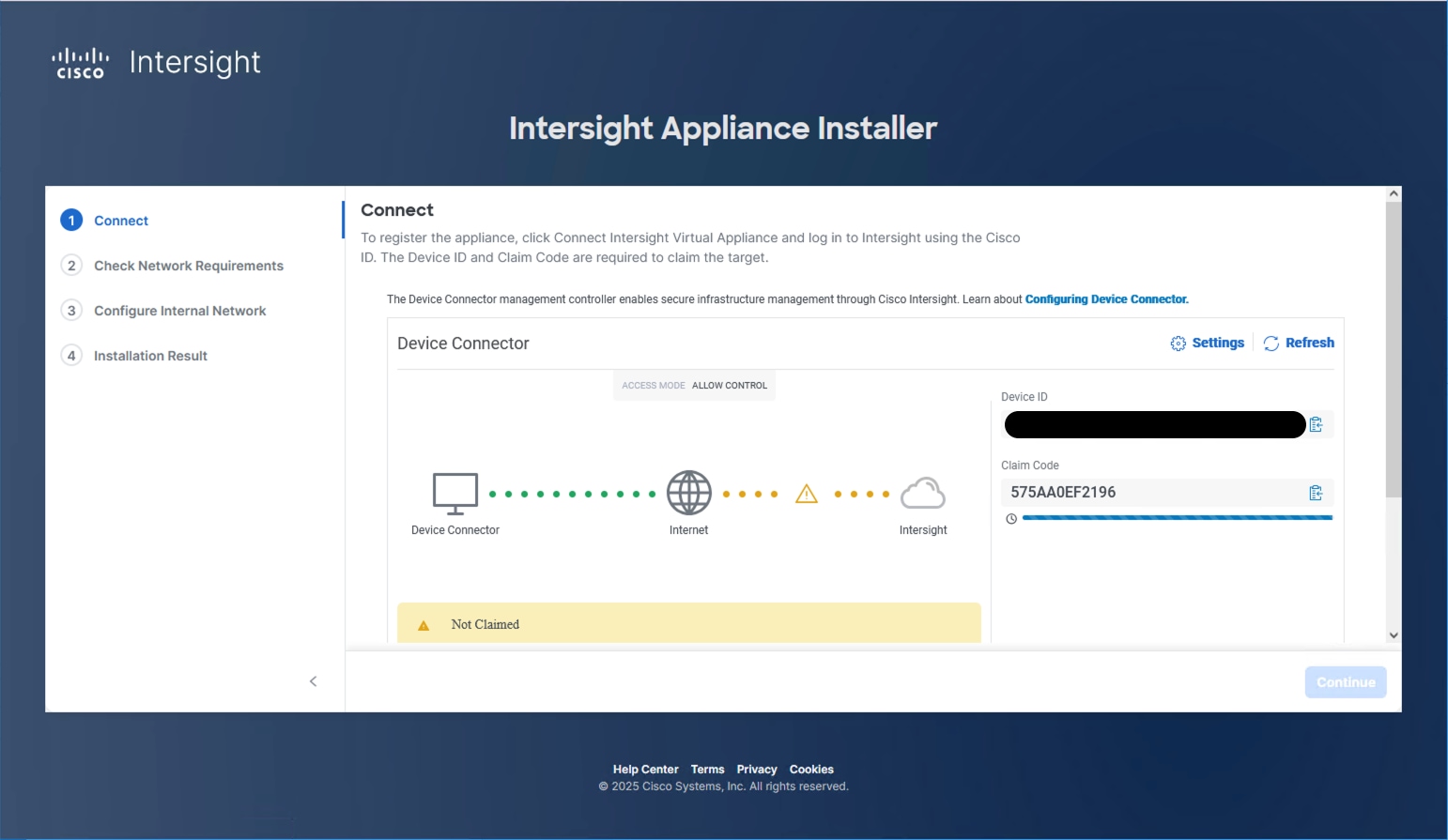

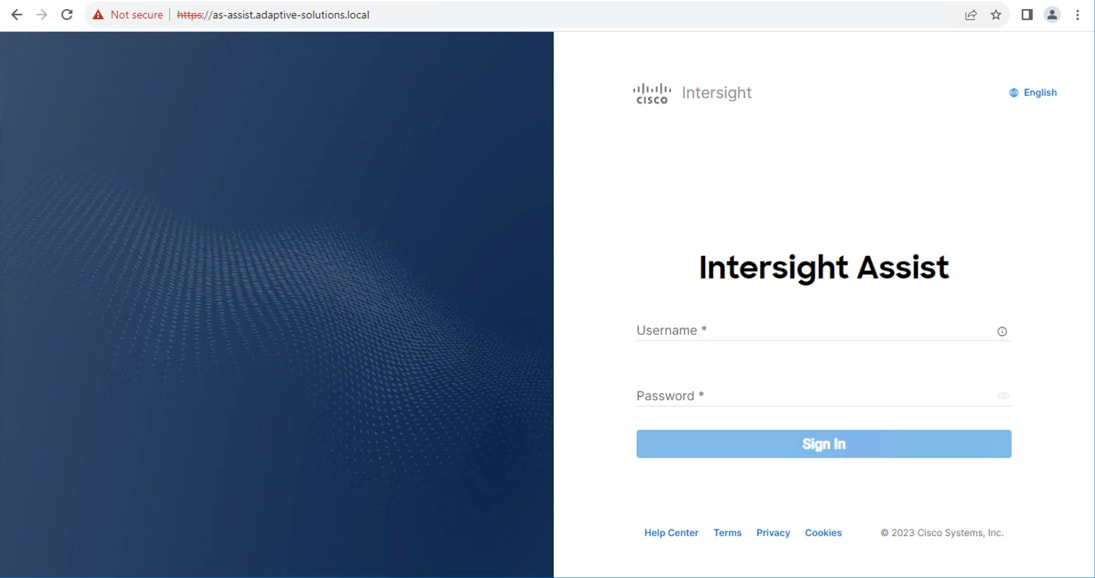

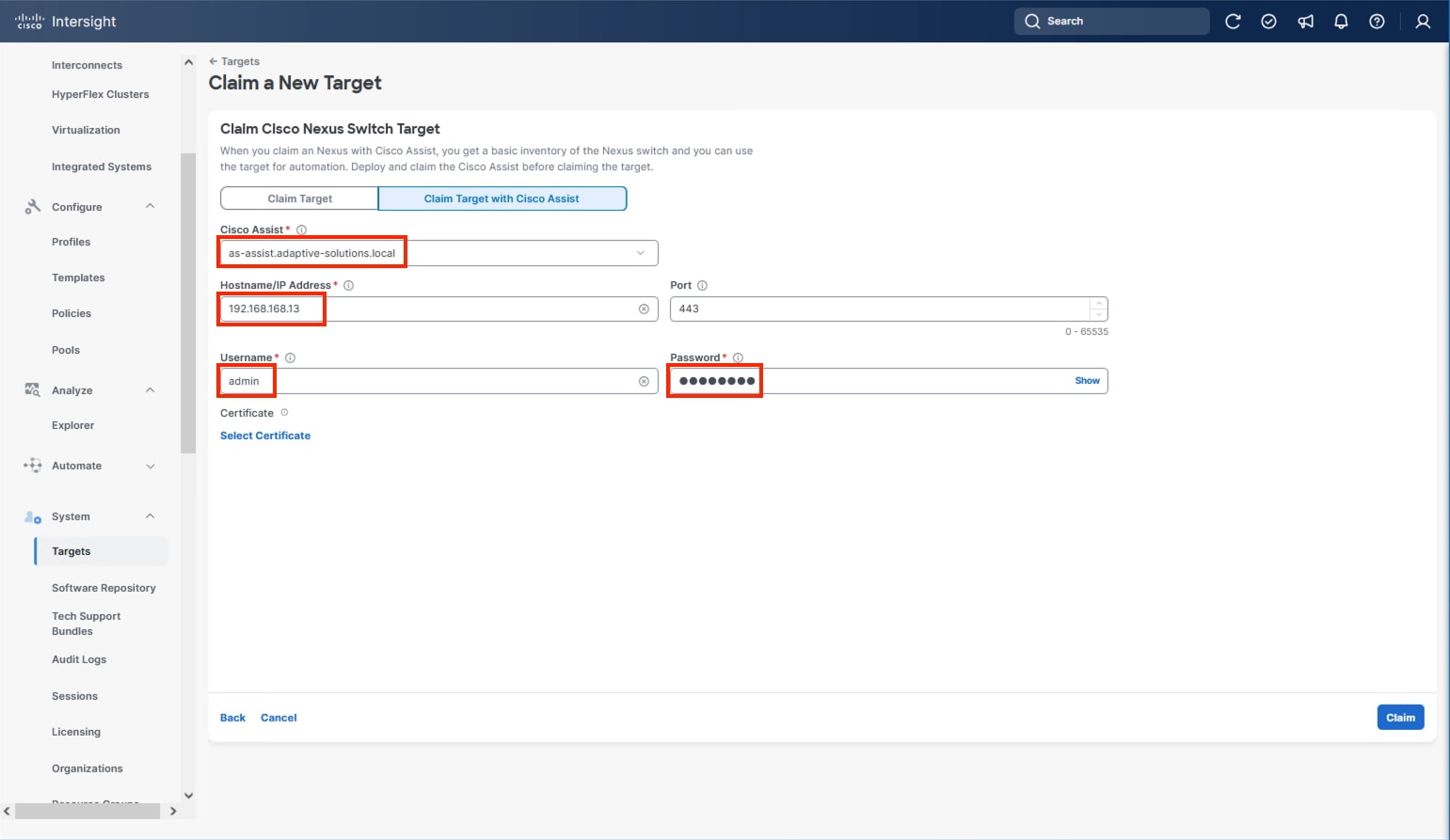

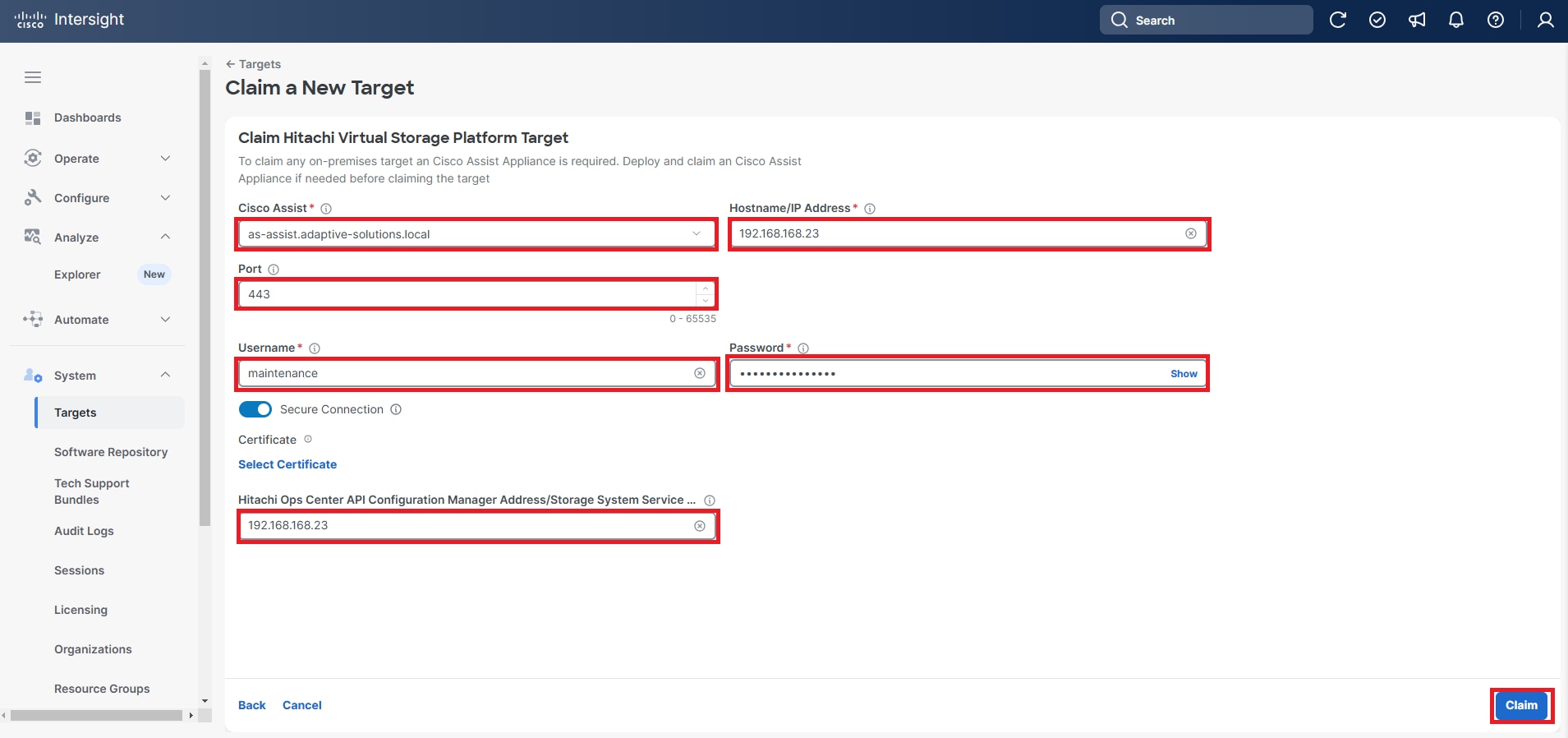

● Cisco Intersight Assist Virtual Appliance to connect the Hitachi VSP One Block, VMware vCenter, and Cisco Nexus switches with Cisco Intersight.

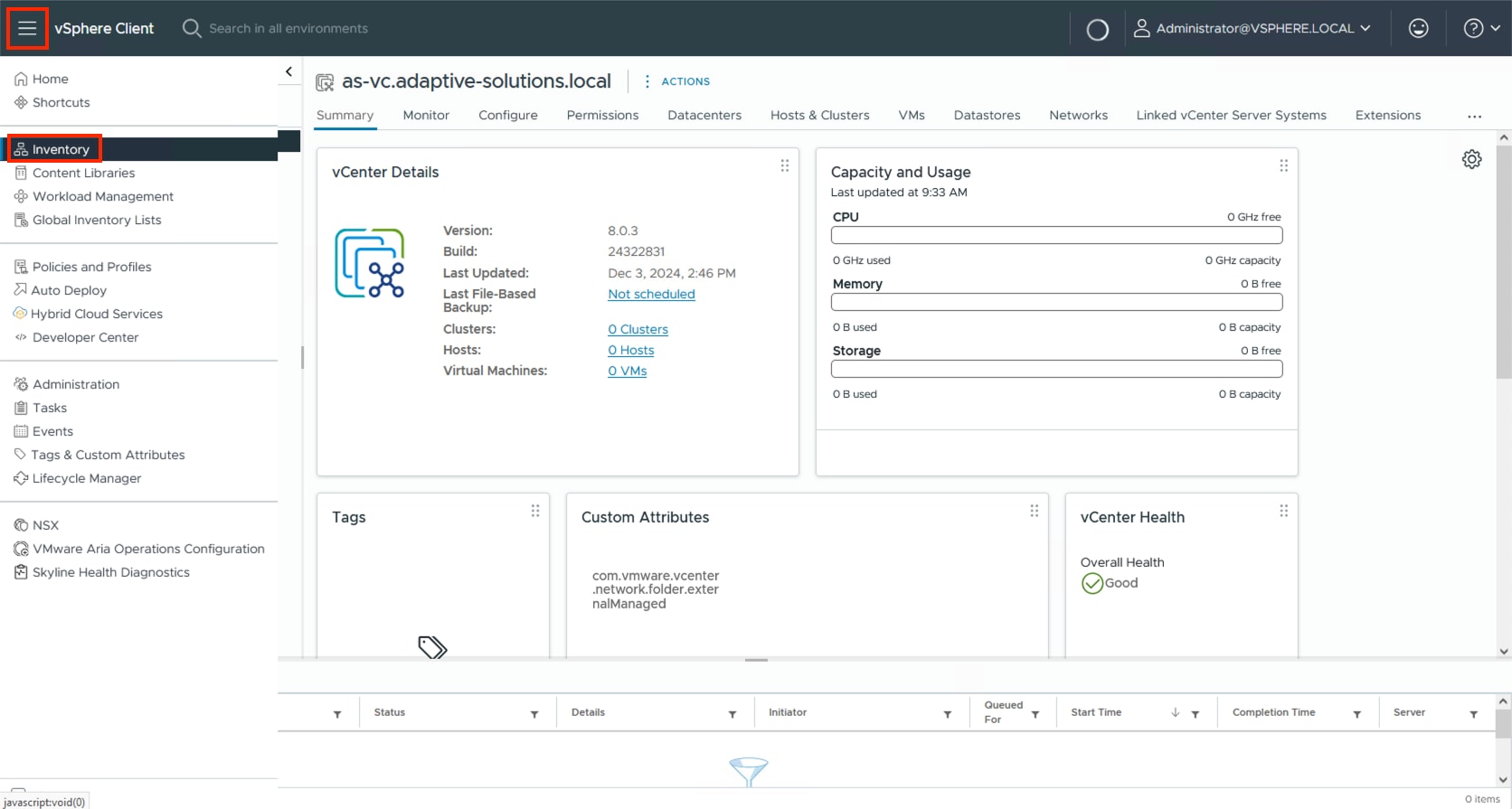

● VMware vCenter to set up and manage the virtual infrastructure and to integrate it with Cisco Intersight.

● Hitachi VSP One Block Administrator.

● Hitachi Vantara’s Command Control Interface (CCI) Raidcom software, which serves as the primary method to configure the IP storage protocols of the VSP One Block.

● Hitachi Vantara VSP One Block Storage Modules for Red Hat Ansible.

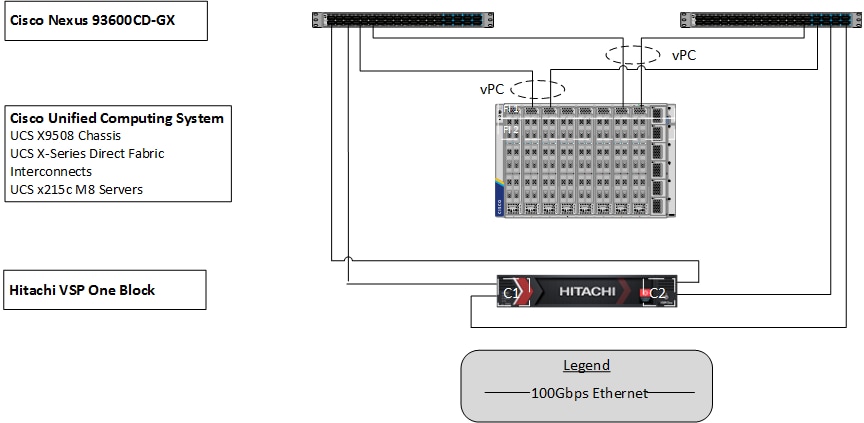

Figure 1 shows the validated hardware components and connections used in the Adaptive Solutions Virtual Server Infrastructure design.

The reference hardware configuration includes:

● Two Cisco Nexus 93600CD-GX switches in Cisco NX-OS mode, providing the switching fabric.

● Two Cisco UCS X-Series Direct Fabric Interconnects (FIs), providing chassis connectivity. Each FI connects to each Cisco Nexus 93600CD-GX with one 100 Gigabit Ethernet port, and the two uplinks from each FI are configured as a port channel.

● Hitachi VSP One Block controllers, each connecting with one 100 Gbps NVMe/TCP port to each Cisco Nexus 93600CD-GX switch, delivering traffic to the IP SAN network.

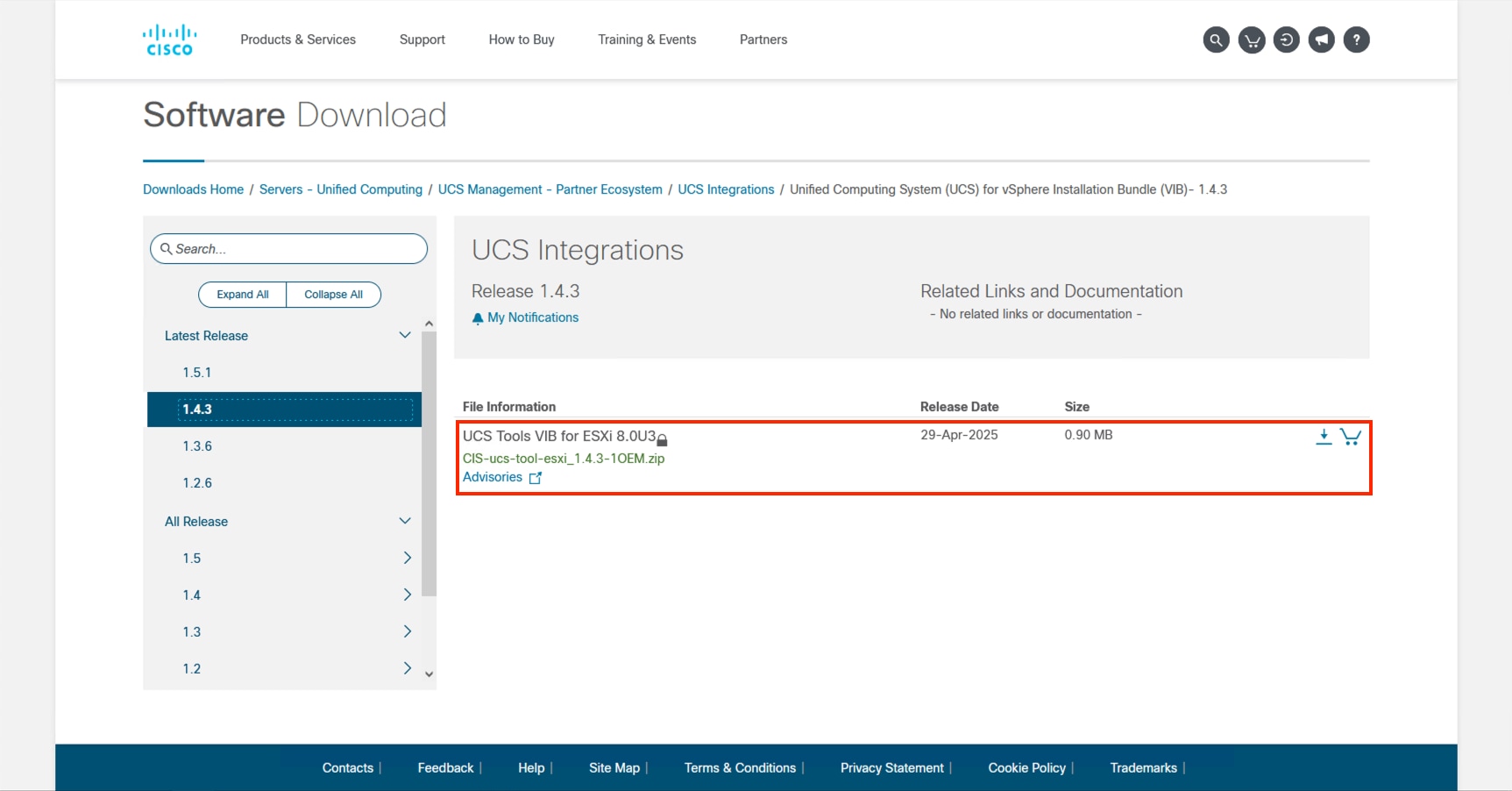

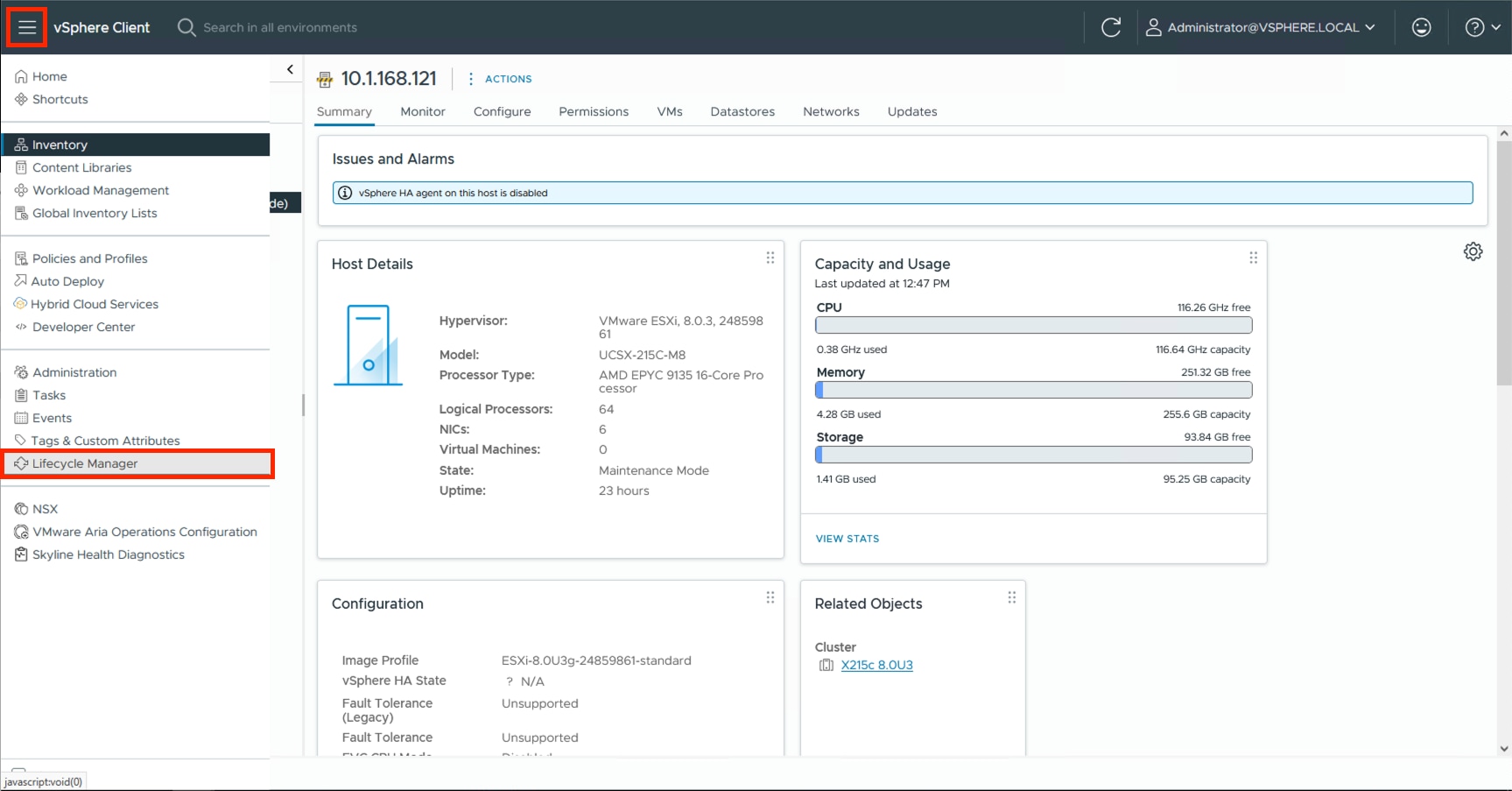

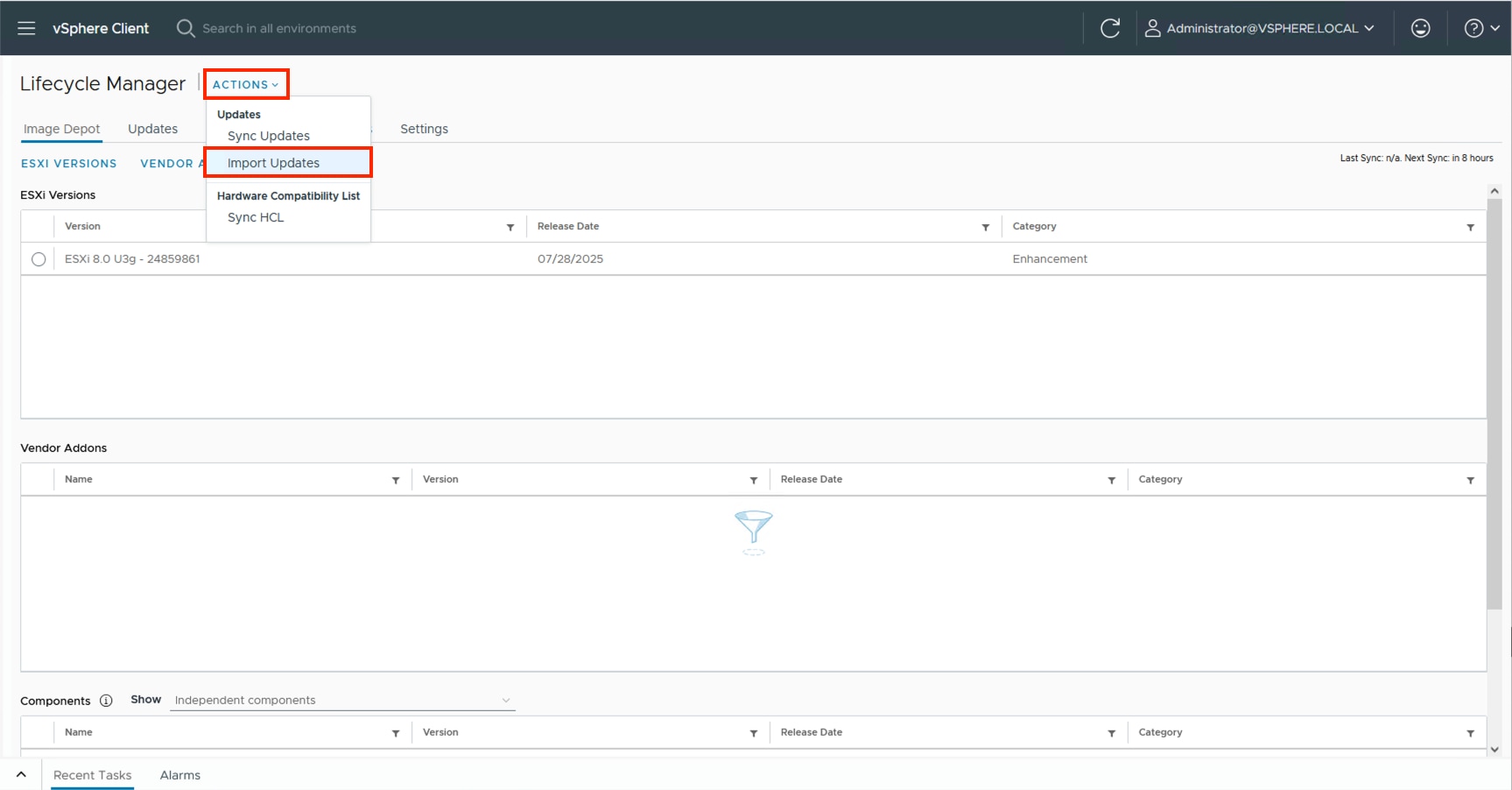

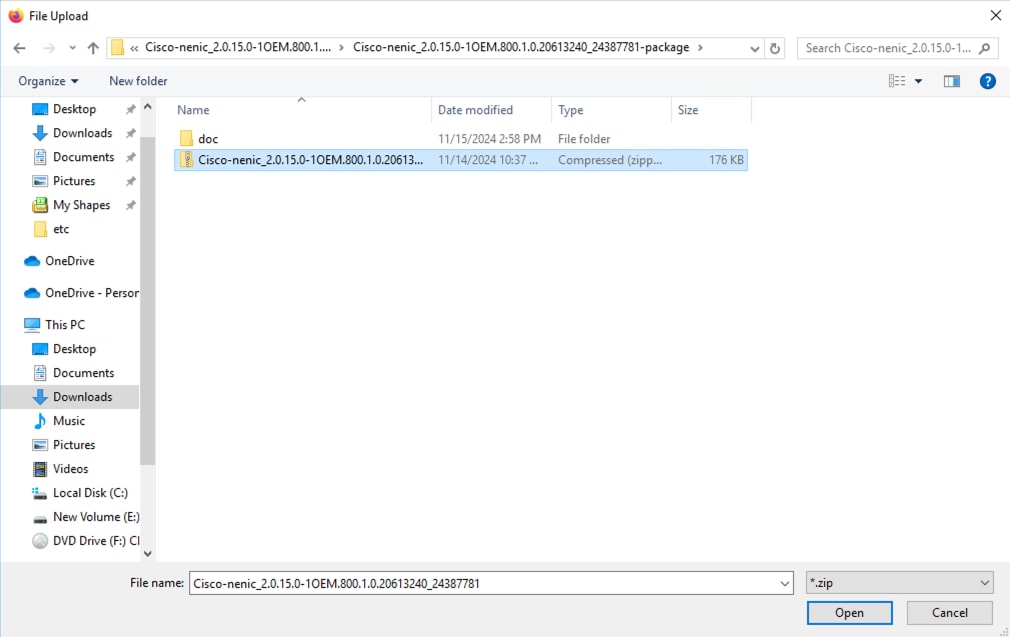

Table 1 lists the software revisions for various components of the solution.

| Layer |

Device |

Image |

Comments |

| Network |

Cisco Nexus 93600CD-GX NX-OS |

10.4(5)M |

|

| Compute |

Cisco UCS X-Direct 9108-100G Fabric Interconnect |

4.3(5.250033) |

|

| Cisco UCS X215c M8 |

5.3(0.250001) |

|

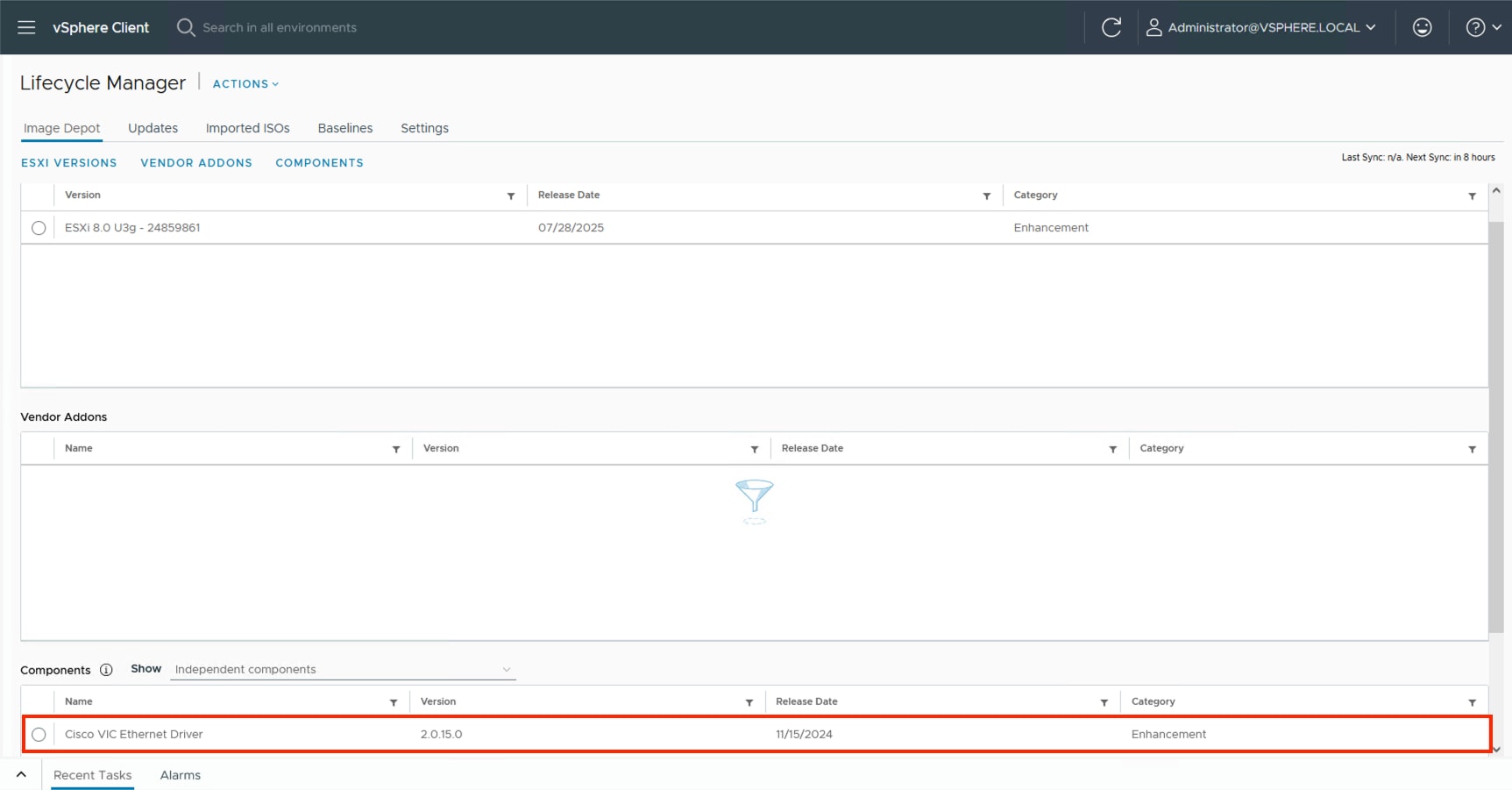

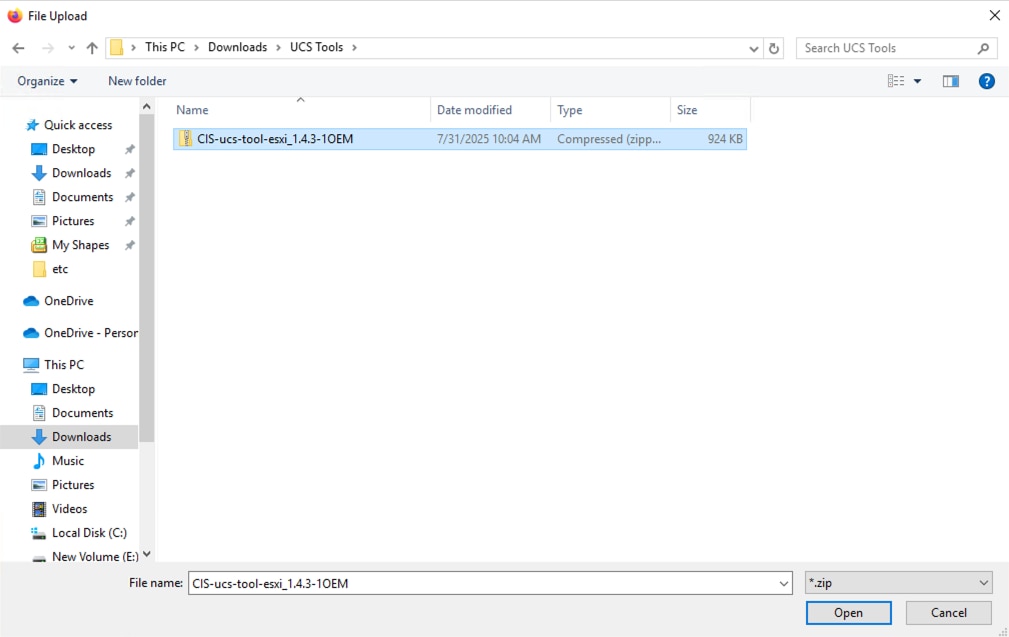

|

| Cisco UCS Tools |

1.4.3 |

|

|

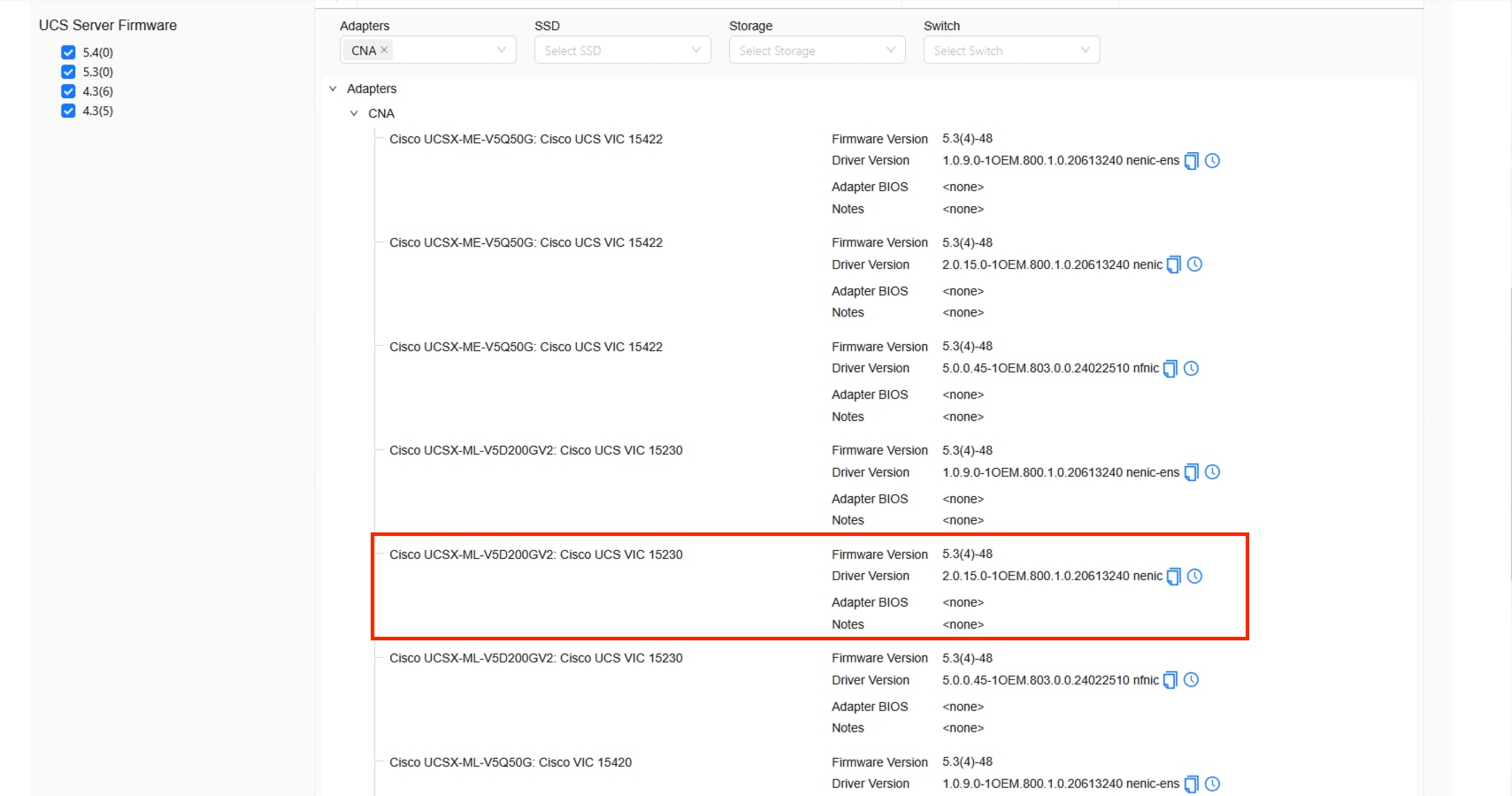

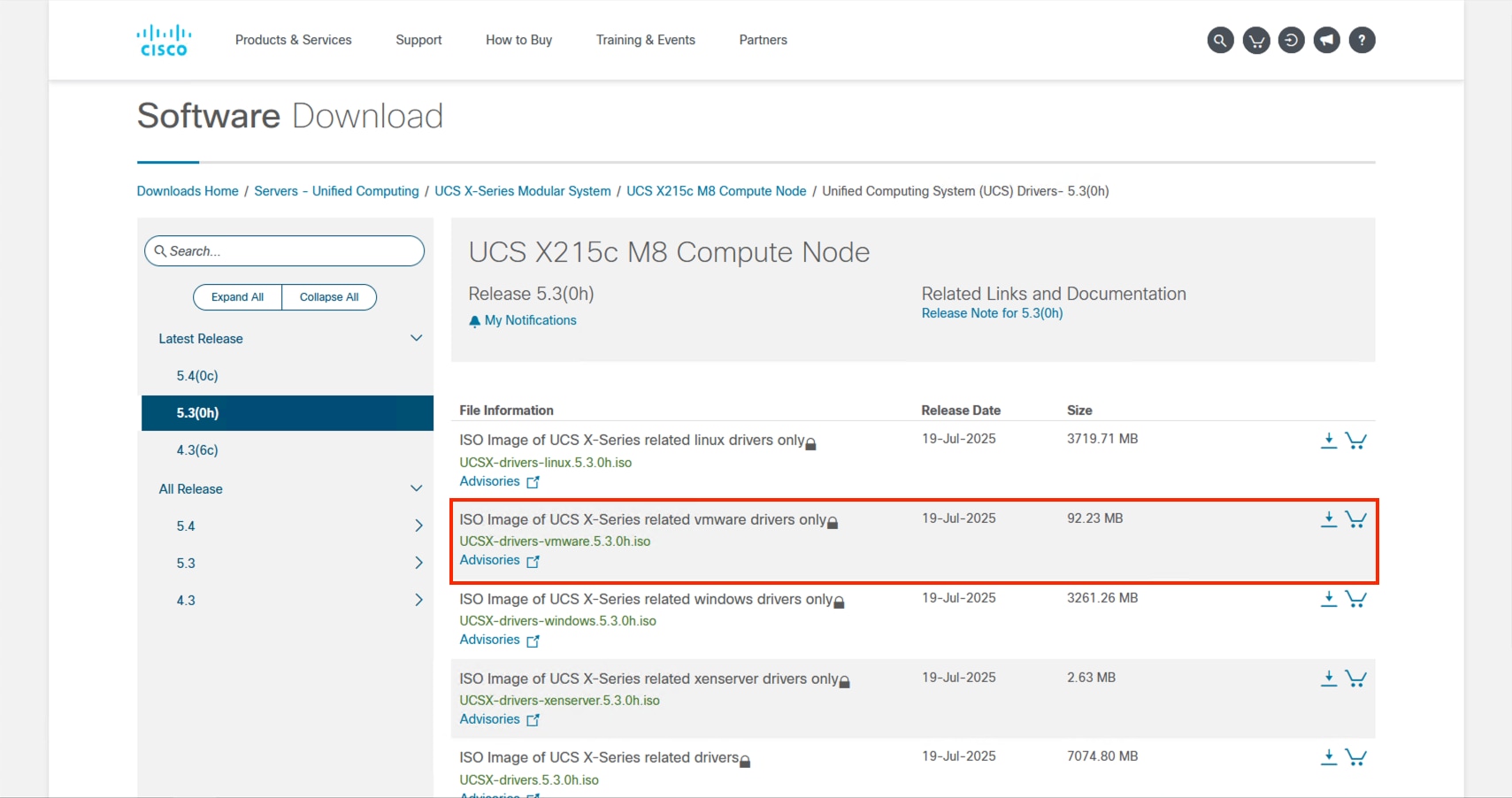

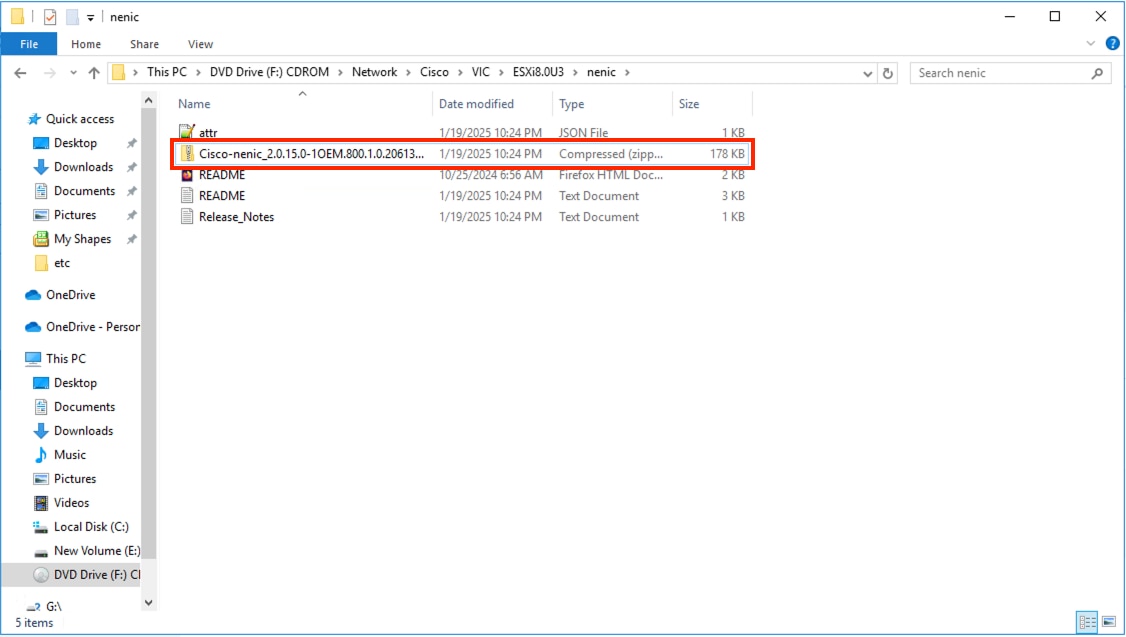

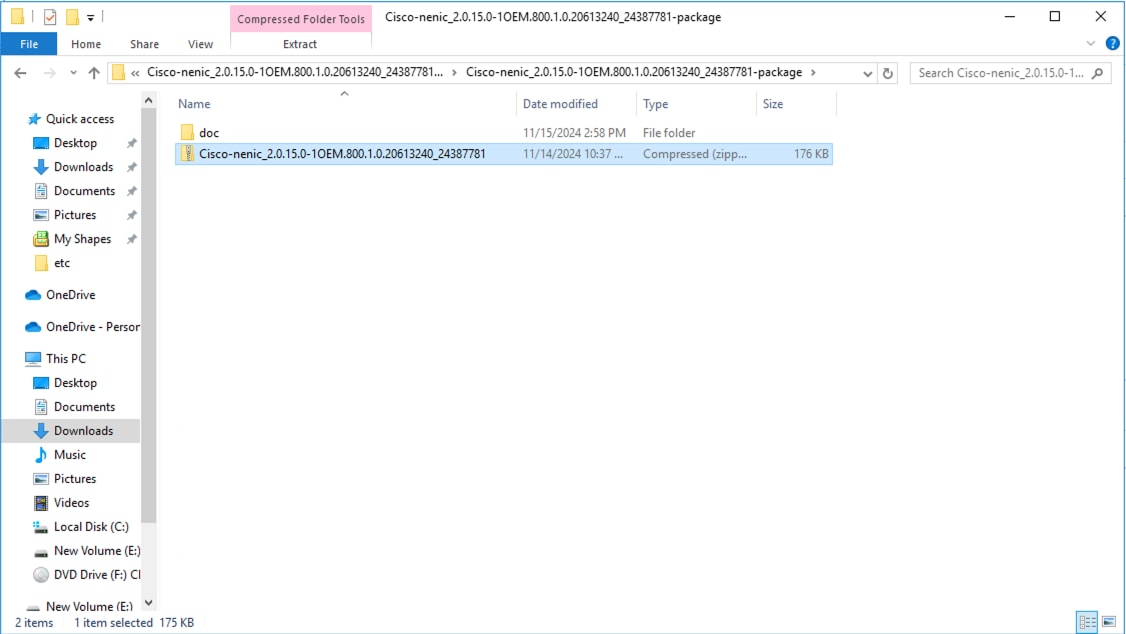

| VMware ESXi nenic Ethernet Driver |

2.0.15.0 |

|

|

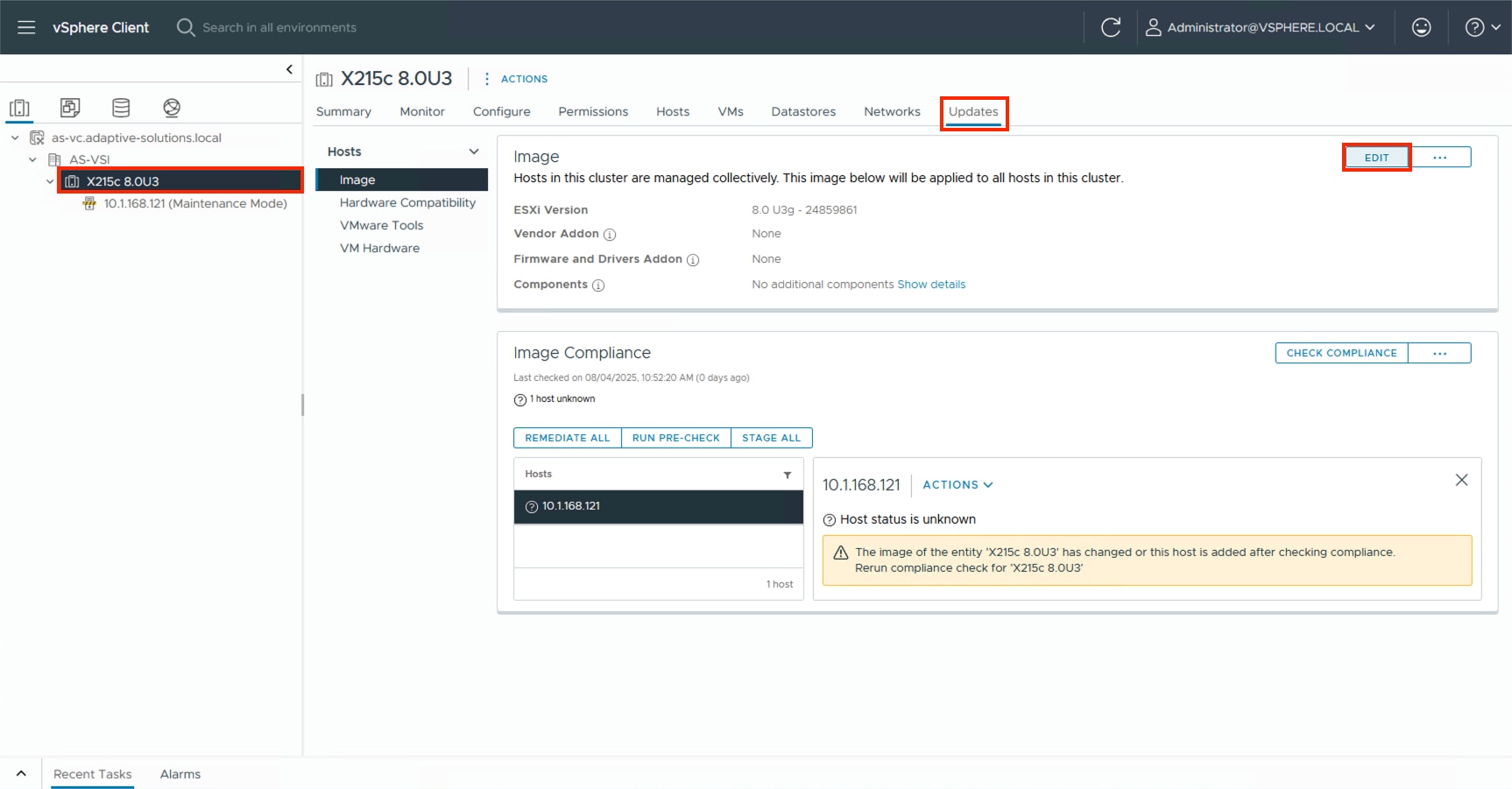

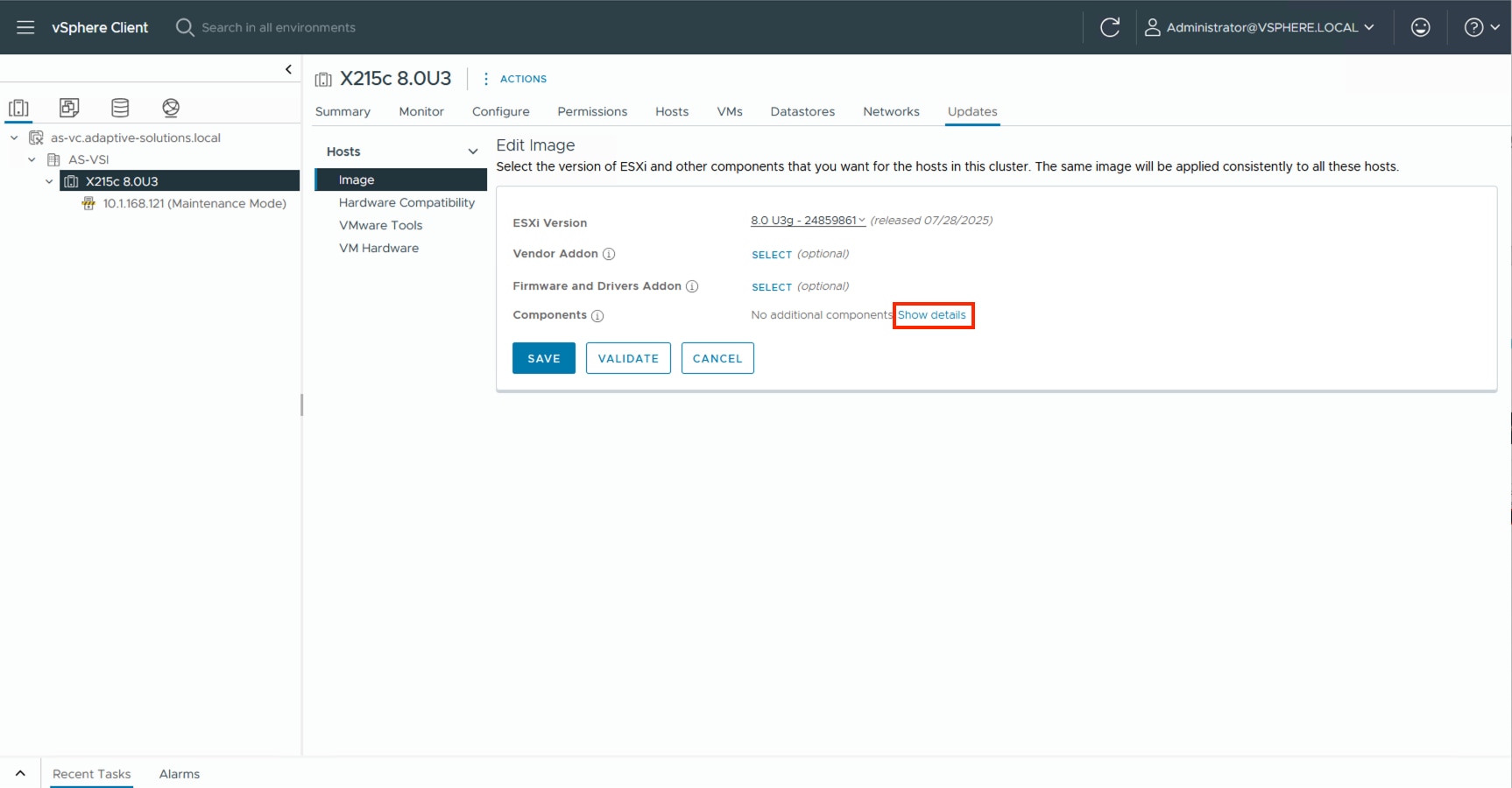

| VMware ESXi |

8.0 Update 3g |

Build 24859861 |

|

| VMware vCenter Appliance |

8.0 Update 3e |

Build 24674346 |

|

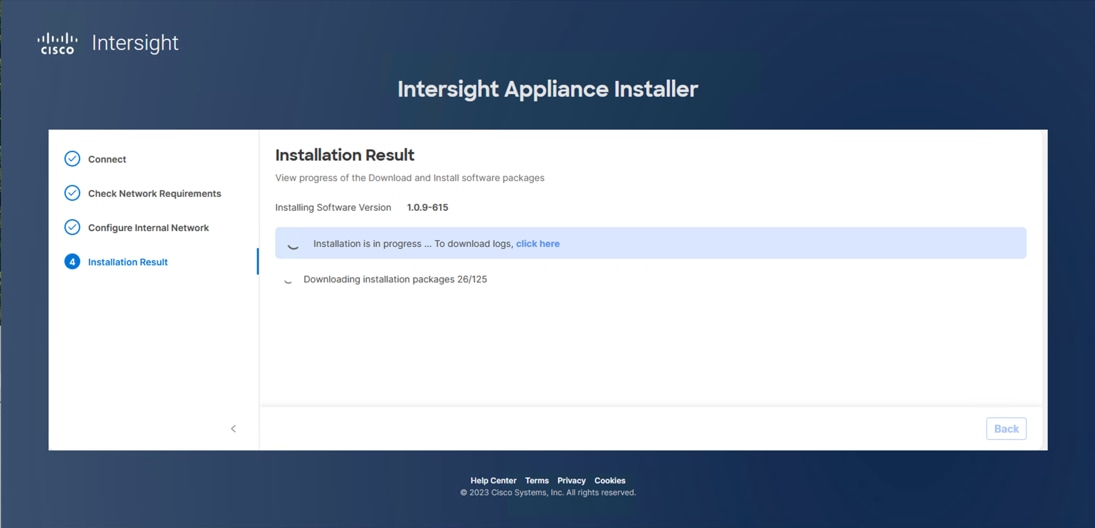

| Cisco Intersight Assist Appliance |

1.1.2-0 |

1.1.2-0 initially installed; will be automatically upgraded as new releases become available. |

|

| Storage |

Hitachi VSP One Block |

SVOS 10.4.0 A3-04-02-40/00 |

|

| Hitachi Vantara VSP One Block Storage Modules for Red Hat Ansible |

VSP One Block Storage Modules 3.5 Red Hat Enterprise Linux 8.10 Ansible-Core 2.16.3 |

|

Table 2 lists the VLANs configured in the environment and details their usage.

| VLAN ID | Name | Usage | IP Subnet used in this deployment |
|---|---|---|---|
| 2 | Native-VLAN | Use VLAN 2 as the native VLAN instead of the default VLAN (1). | |
| 19 | OOB-MGMT-VLAN | Out-of-band management VLAN to connect management ports for various devices. | 192.168.168.0/24; GW: 192.168.168.254** |
| 41 | NVMe-TCP-A | Fabric A NVMe over TCP traffic | 10.0.41.0/24* |
| 42 | NVMe-TCP-B | Fabric B NVMe over TCP traffic | 10.0.42.0/24* |
| 119 | IB-MGMT-VLAN | In-band management VLAN used for all in-band management connectivity (for example, ESXi hosts and VM management). | 10.1.168.0/24; GW: 10.1.168.254 |
| 1000 | vMotion | VMware vMotion traffic | 10.0.0.0/24* |
| 1100 | VM-Traffic | VM data traffic sourced from FI-A and FI-B | 10.1.100.0/24; GW: 10.1.100.254 |
| 1101 | VM-Traffic-A | VM data traffic sourced from FI-A | 10.1.101.0/24; GW: 10.1.101.254 |
| 1102 | VM-Traffic-B | VM data traffic sourced from FI-B | 10.1.102.0/24; GW: 10.1.102.254 |

* An IP gateway is not required for these subnets because no routing is used.

** OOB-MGMT-VLAN is not carried on the FI uplinks and is not part of the VLAN policy.
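Before the VLANs are entered on the switches and in Cisco Intersight, the addressing in Table 2 can be sanity-checked with a short script. The following Python sketch uses only the standard-library ipaddress module to report gateways that fall outside their subnet and subnets that overlap. The function name check_vlan_plan is hypothetical, and the sketch assumes VLAN 1102 uses 10.1.102.0/24, following the numbering of VLANs 1100 and 1101.

```python
import ipaddress

# VLAN plan from Table 2: VLAN ID -> (name, subnet, gateway or None).
# VLAN 2 (native, no subnet) and VLAN 19 (OOB, not carried on the FI uplinks) are left out.
# Assumption: VLAN 1102 uses 10.1.102.0/24, following the pattern of VLANs 1100 and 1101.
VLAN_PLAN = {
    41: ("NVMe-TCP-A", "10.0.41.0/24", None),
    42: ("NVMe-TCP-B", "10.0.42.0/24", None),
    119: ("IB-MGMT-VLAN", "10.1.168.0/24", "10.1.168.254"),
    1000: ("vMotion", "10.0.0.0/24", None),
    1100: ("VM-Traffic", "10.1.100.0/24", "10.1.100.254"),
    1101: ("VM-Traffic-A", "10.1.101.0/24", "10.1.101.254"),
    1102: ("VM-Traffic-B", "10.1.102.0/24", "10.1.102.254"),
}


def check_vlan_plan(plan):
    """Return a list of problems: gateways outside their subnet, or subnets that overlap."""
    problems = []
    nets = {}
    for vlan, (name, subnet, gateway) in plan.items():
        net = ipaddress.ip_network(subnet)  # raises ValueError if host bits are set
        nets[vlan] = net
        if gateway is not None and ipaddress.ip_address(gateway) not in net:
            problems.append(f"VLAN {vlan} ({name}): gateway {gateway} is outside {net}")
    ids = sorted(nets)
    for i, a in enumerate(ids):
        for b in ids[i + 1:]:
            if nets[a].overlaps(nets[b]):
                problems.append(f"VLAN {a} and VLAN {b} share address space ({nets[a]}, {nets[b]})")
    return problems


if __name__ == "__main__":
    for line in check_vlan_plan(VLAN_PLAN) or ["No problems found"]:
        print(line)
```

Entering 10.1.101.0/24 for both VLAN 1101 and VLAN 1102 would be reported as an overlap, which is the kind of copy-and-paste error this check is meant to catch.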

Table 3 lists the infrastructure VMs necessary for the VSI environment hosted on pre-existing management infrastructure.

| Virtual Machine Description | VLAN | IP Address |
|---|---|---|
| Cisco Intersight Assist | 119 | 10.1.168.99 |
| vCenter Server | 119 | 10.1.168.150 |
| Active Directory | 119 | 10.1.168.101 |
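The addresses in Table 3 can be checked against the IB-MGMT subnet from Table 2 in the same spirit. This is a minimal sketch with a hypothetical helper, vm_address_problems; it only checks that each address is a usable host address in the subnet, is not the gateway, and is not assigned twice. It does not verify DNS records or reachability.

```python
import ipaddress

# Table 3 values; all three VMs sit on the IB-MGMT VLAN (119), whose subnet and
# gateway come from Table 2.
IB_MGMT_NET = ipaddress.ip_network("10.1.168.0/24")
IB_MGMT_GW = ipaddress.ip_address("10.1.168.254")
INFRA_VMS = {
    "Cisco Intersight Assist": "10.1.168.99",
    "vCenter Server": "10.1.168.150",
    "Active Directory": "10.1.168.101",
}


def vm_address_problems(vms, net, gateway):
    """Flag addresses that are not usable hosts in `net`, equal the gateway, or repeat."""
    problems, owners = [], {}
    for name, text in vms.items():
        ip = ipaddress.ip_address(text)
        if ip not in net or ip in (net.network_address, net.broadcast_address):
            problems.append(f"{name}: {ip} is not a usable host address in {net}")
        if ip == gateway:
            problems.append(f"{name}: {ip} is the subnet gateway")
        if ip in owners:
            problems.append(f"{name}: {ip} is already assigned to {owners[ip]}")
        owners.setdefault(ip, name)  # remember the first VM that used this address
    return problems


if __name__ == "__main__":
    print(vm_address_problems(INFRA_VMS, IB_MGMT_NET, IB_MGMT_GW) or "Addresses OK")
```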

The information in this section is provided as a reference for cabling the physical equipment in the environment. This includes a diagram and tables for each layer of infrastructure, detailing the local and remote port locations.

Note: If you modify the validated architecture, see the Cisco Hardware Compatibility Matrix and the Hitachi Product Compatibility Guide for guidance.

This document assumes that the out-of-band management ports are plugged into an existing management infrastructure at the deployment site. These interfaces will be used in various configuration steps.

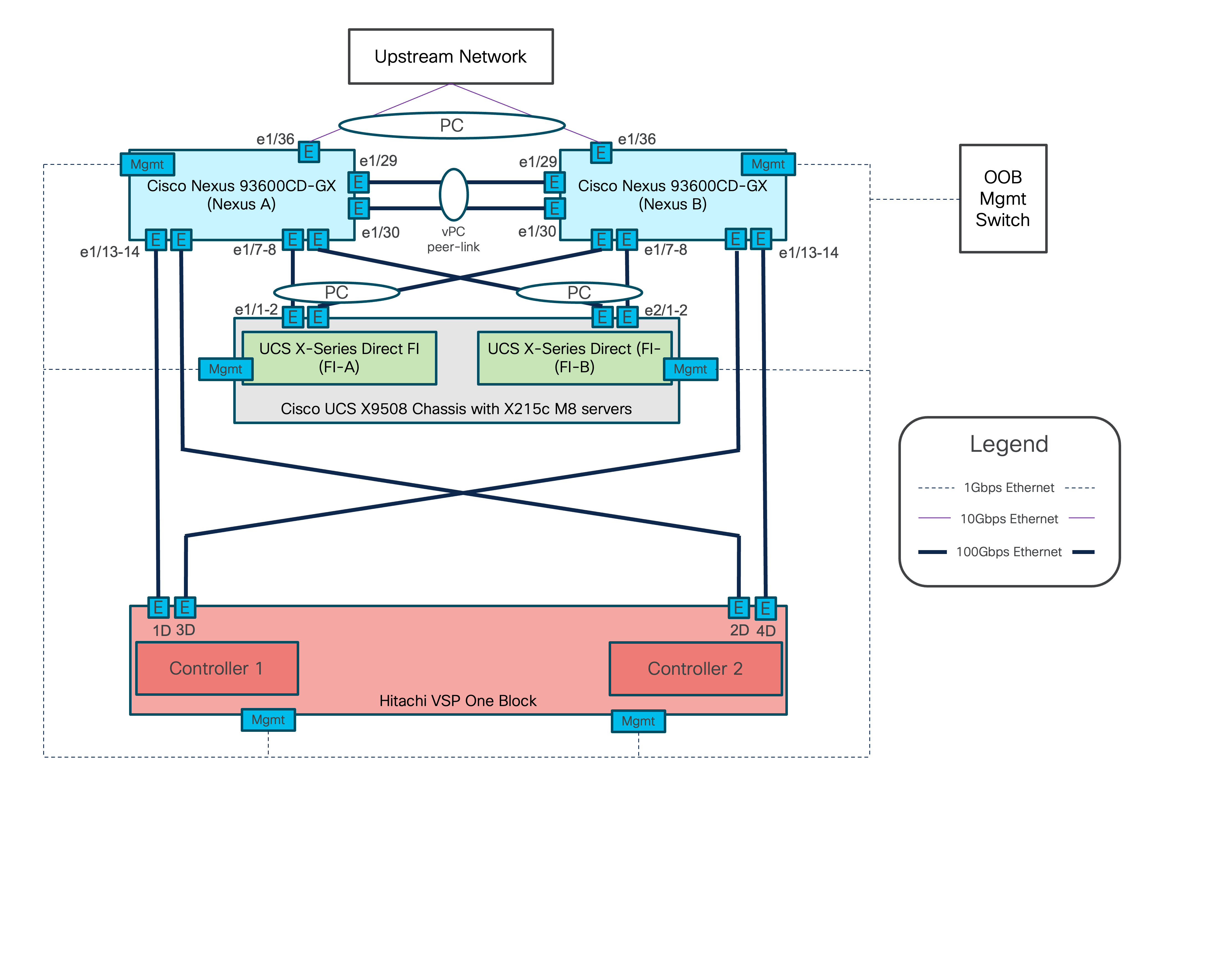

Figure 2 details the cable connections used in the validation lab for the Adaptive Solutions VSI topology based on the Cisco UCS X-Series Direct 9108-100G Fabric Interconnect and the Hitachi VSP One. The Cisco UCS fabric interconnects connect to the Cisco Nexus 93600CD-GX switch pair over 100G links configured as port channels, which terminate on vPCs on the Nexus switches. The 100G links from the Nexus switches to the VSP connect as individual (unbundled) ports. This switch model also supports 400G uplinks upstream of the Nexus switches, but they are not used in this design.

Additional 1Gb management connections are required to an out-of-band network switch that sits apart from the Adaptive Solutions infrastructure. Each Cisco UCS fabric interconnect, each Cisco Nexus switch, and each of the two VSP controllers connects to the out-of-band network switch. Layer 3 network connectivity is required between the out-of-band (OOB) and in-band (IB) management subnets.

Tables 4 through 9 list the specifics of the connections for each component.

Table 4. Cisco Nexus 93600CD-GX A Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| Cisco Nexus 93600CD-GX A | 1/7 | QSFP-100G-AOC2M | Cisco UCS X-Series Direct FI A | 1/7 |
| | 1/8 | QSFP-100G-AOC2M | Cisco UCS X-Series Direct FI B | 1/7 |
| | 1/13 | QSFP-100G-SR4 | Hitachi VSP One Block | CL1-D |
| | 1/14 | QSFP-100G-SR4 | Hitachi VSP One Block | CL2-D |
| | 1/29 | QSFP-100G-AOC1M | Cisco Nexus 93600CD-GX B | 1/29 |
| | 1/30 | QSFP-100G-AOC1M | Cisco Nexus 93600CD-GX B | 1/30 |
| | 1/36 | 10GBASE-SR | Upstream Network | |
| | Mgmt | Cat 5 | Management Switch | |

Table 5. Cisco Nexus 93600CD-GX B Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| Cisco Nexus 93600CD-GX B | 1/7 | QSFP-100G-AOC2M | Cisco UCS X-Series Direct FI A | 1/8 |
| | 1/8 | QSFP-100G-AOC2M | Cisco UCS X-Series Direct FI B | 1/8 |
| | 1/13 | QSFP-100G-SR4 | Hitachi VSP One Block | CL3-D |
| | 1/14 | QSFP-100G-SR4 | Hitachi VSP One Block | CL4-D |
| | 1/29 | QSFP-100G-AOC1M | Cisco Nexus 93600CD-GX A | 1/29 |
| | 1/30 | QSFP-100G-AOC1M | Cisco Nexus 93600CD-GX A | 1/30 |
| | 1/36 | 10GBASE-SR | Upstream Network | |
| | Mgmt | Cat 5 | Management Switch | |

Table 6. Cisco UCS X-Series Direct Fabric Interconnect A Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| Cisco UCS X-Series Direct FI A | 1/7 | QSFP-100G-AOC2M | Cisco Nexus 93600CD-GX A | 1/7 |
| | 1/8 | QSFP-100G-AOC2M | Cisco Nexus 93600CD-GX B | 1/7 |
| | Mgmt | Cat 5 | Management Switch | |

Table 7. Cisco UCS X-Series Direct Fabric Interconnect B Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| Cisco UCS X-Series Direct FI B | 1/7 | QSFP-100G-AOC2M | Cisco Nexus 93600CD-GX A | 1/8 |
| | 1/8 | QSFP-100G-AOC2M | Cisco Nexus 93600CD-GX B | 1/8 |
| | Mgmt | Cat 5 | Management Switch | |

Table 8. Hitachi VSP One Block Controller 1

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| Hitachi VSP One Block Controller 1 | 1D | FTLF8564D1BCW | Cisco Nexus 93600CD-GX A | 1/13 |
| | 3D | FTLF8564D1BCW | Cisco Nexus 93600CD-GX B | 1/13 |
| | Mgmt | Cat 5 | Management Switch | |

Table 9. Hitachi VSP One Block Controller 2

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| Hitachi VSP One Block Controller 2 | 2D | FTLF8564D1BCW | Cisco Nexus 93600CD-GX A | 1/14 |
| | 4D | FTLF8564D1BCW | Cisco Nexus 93600CD-GX B | 1/14 |
| | Mgmt | Cat 5 | Management Switch | |

The cables and transceivers used in the validated environment are examples of supported connections and are not prescriptive to the solution. They demonstrate a set of valid options for this design. For additional supported transceivers and cable types, see the specific product specification sheets and the Cisco Optics-to-Device Compatibility Matrix here: https://tmgmatrix.cisco.com/.
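Because each link appears in two cabling tables (once from each end), the tables can be cross-checked mechanically. The sketch below is a hypothetical helper, not a Cisco or Hitachi tool; the short device names (Nexus-A, FI-A, and so on) are abbreviations chosen for illustration, and only the FI A uplink rows from Tables 4, 5, and 6 are encoded. Rows without a remote port, such as the upstream and management links, should be left out. It requires Python 3.9 or later for the built-in generic type hints.

```python
# One row per cabling-table entry: (local device, local port, remote device, remote port).
Row = tuple[str, str, str, str]

# Rows for the Cisco UCS FI A uplinks, taken from Tables 4, 5, and 6.
FI_A_ROWS: list[Row] = [
    ("Nexus-A", "1/7", "FI-A", "1/7"),  # Table 4
    ("Nexus-B", "1/7", "FI-A", "1/8"),  # Table 5
    ("FI-A", "1/7", "Nexus-A", "1/7"),  # Table 6
    ("FI-A", "1/8", "Nexus-B", "1/7"),  # Table 6
]


def unmatched(rows: list[Row]) -> list[Row]:
    """Return rows whose mirror entry (the remote end describing the same link) is missing."""
    present = set(rows)
    return [r for r in rows if (r[2], r[3], r[0], r[1]) not in present]


if __name__ == "__main__":
    print(unmatched(FI_A_ROWS) or "Every link is described consistently from both ends")
```

For example, if one table lists a link as Nexus A 1/8 to FI B 1/7 while another lists it as FI B 1/1 to Nexus A 1/8, both rows are returned, because neither has a matching mirror entry.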

Cisco Nexus LAN Switch Configuration

This chapter contains the following:

● Cisco Nexus Switch Configuration

This chapter provides detailed procedures for configuring the Cisco Nexus 93600CD-GX switches for use in the LAN switching of the Adaptive Solutions Virtual Server Infrastructure.

Follow the physical connectivity guidelines for infrastructure cabling as explained in the Adaptive Solutions Cabling section.

The following procedures describe the basic configuration of the Cisco Nexus switches for use in the Adaptive Solutions VSI. These procedures assume Cisco Nexus 9000 NX-OS release 10.4(5)M, the Cisco suggested Nexus switch release at the time of this validation. If the switches are running a different release, they can be upgraded after initial configuration by following the Cisco Nexus 9000 Series NX-OS Software Upgrade and Downgrade Guide, Release 10.4(x).

Procedure 1. Set up Initial Configuration

To set up the initial configuration for the Cisco Nexus A switch on <nexus-A-hostname>, follow these steps from a serial console:

Step 1. Configure the switch:

Note: On initial boot, the NX-OS setup should start automatically and attempt to enter Power On Auto Provisioning (POAP).

Abort Power On Auto Provisioning [yes - continue with normal setup, skip - bypass password and basic configuration, no - continue with Power On Auto Provisioning] (yes/skip/no)[no]: yes

Disabling POAP.......Disabling POAP

poap: Rolling back, please wait... (This may take 5-15 minutes)

---- System Admin Account Setup ----

Do you want to enforce secure password standard (yes/no) [y]: Enter

Enter the password for "admin": <password>

Confirm the password for "admin": <password>

Would you like to enter the basic configuration dialog (yes/no): yes

Create another login account (yes/no) [n]: Enter

Configure read-only SNMP community string (yes/no) [n]: Enter

Configure read-write SNMP community string (yes/no) [n]: Enter

Enter the switch name: <nexus-A-hostname>

Continue with Out-of-band (mgmt0) management configuration? (yes/no) [y]: Enter

Mgmt0 IPv4 address: <nexus-A-out_of_band_mgmt0-ip>

Mgmt0 IPv4 netmask: <nexus-A-mgmt0-netmask>

Configure the default gateway? (yes/no) [y]: Enter

IPv4 address of the default gateway: <nexus-A-mgmt0-gw>

Configure advanced IP options? (yes/no) [n]: Enter

Enable the telnet service? (yes/no) [n]: Enter

Enable the ssh service? (yes/no) [y]: Enter

Type of ssh key you would like to generate (dsa/rsa) [rsa]: Enter

Number of rsa key bits <1024-2048> [1024]: Enter

Configure the ntp server? (yes/no) [n]: Enter

Configure default interface layer (L3/L2) [L2]: Enter

Configure default switchport interface state (shut/noshut) [noshut]: shut

Enter basic FC configurations (yes/no) [n]: n

Configure CoPP system profile (strict/moderate/lenient/dense) [strict]: Enter

Would you like to edit the configuration? (yes/no) [n]: Enter

Step 2. Review the configuration summary before enabling the configuration:

Use this configuration and save it? (yes/no) [y]: Enter

Step 3. To set up the initial configuration of the Cisco Nexus B switch, repeat steps 1 and 2 with the appropriate host and IP address information.

Cisco Nexus Switch Configuration

To manually configure the Nexus switches, follow these steps:

Procedure 1. Enable Nexus Features

Cisco Nexus A and Cisco Nexus B. Perform these steps on both switches.

Step 1. Log in as admin using ssh.

Step 2. Run the following commands to enable Nexus features:

config t

feature nxapi

feature hsrp

feature udld

feature interface-vlan

feature lacp

feature vpc

feature lldp

Procedure 2. Set Global Configurations

Cisco Nexus A and Cisco Nexus B

Note: Perform these steps on both switches.

Step 1. Run the following commands to set global configurations:

spanning-tree port type network default

spanning-tree port type edge bpduguard default

spanning-tree port type edge bpdufilter default

port-channel load-balance src-dst l4port

ip name-server <dns-server-1> <dns-server-2>

ip domain-name <dns-domain-name>

ip domain-lookup

ntp server <global-ntp-server-ip> use-vrf management

ntp master 3

clock timezone <timezone> <hour-offset> <minute-offset>

For example: clock timezone EST -5 0

clock summer-time <timezone> <start-week> <start-day> <start-month> <start-time> <end-week> <end-day> <end-month> <end-time> <offset-minutes>

For example: clock summer-time EDT 2 Sunday March 02:00 1 Sunday November 02:00 60

ip route 0.0.0.0/0 <oob-mgmt-vlan-gateway>

copy run start

Note: For more information on configuring the time zone and daylight saving time (summer time), see Cisco Nexus 9000 Series NX-OS Fundamentals Configuration Guide, Release 10.4(x).
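The clock summer-time arguments are easier to read with a worked example. The following Python sketch is a hypothetical helper (not part of NX-OS) that interprets the arguments after the time-zone name in the EDT example: daylight saving time starts on the second Sunday in March at 02:00, ends on the first Sunday in November at 02:00, and shifts the clock by 60 minutes. It assumes English day and month names (the default locale) and handles only week numbers that exist in the given month; it does not model any special meaning the switch may give to a week value of 5.

```python
import calendar
import datetime as dt

# English day/month names -> numbers (assumes the default "C" locale).
DAYS = {name: i for i, name in enumerate(calendar.day_name)}  # Monday=0 .. Sunday=6
MONTHS = {name: i for i, name in enumerate(calendar.month_name) if name}  # January=1 .. December=12


def nth_weekday(year: int, week: int, day: str, month: str) -> dt.date:
    """Return the date of the week-th <day> in <month>, for example the 2nd Sunday of March."""
    m = MONTHS[month]
    first = dt.date(year, m, 1)
    offset = (DAYS[day] - first.weekday()) % 7  # days until the first <day> of the month
    result = first + dt.timedelta(days=offset + 7 * (week - 1))
    if result.month != m:
        raise ValueError(f"{month} {year} has no week-{week} {day}")
    return result


def summer_time_window(year: int, rule: str) -> tuple[dt.datetime, dt.datetime, int]:
    """Interpret the arguments of a 'clock summer-time' line (time-zone name omitted)."""
    sw, sd, sm, st, ew, ed, em, et, offset = rule.split()
    start = dt.datetime.combine(nth_weekday(year, int(sw), sd, sm), dt.time.fromisoformat(st))
    end = dt.datetime.combine(nth_weekday(year, int(ew), ed, em), dt.time.fromisoformat(et))
    return start, end, int(offset)


if __name__ == "__main__":
    start, end, offset = summer_time_window(2025, "2 Sunday March 02:00 1 Sunday November 02:00 60")
    print(start, end, offset)  # 2025-03-09 02:00:00 2025-11-02 02:00:00 60
```

For 2025, this gives a window from March 9 at 02:00 to November 2 at 02:00.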

Procedure 3. Create VLANs

Cisco Nexus A and Cisco Nexus B

Note: Perform these steps on both switches.

Step 1. From the global configuration mode, run the following commands:

vlan <native-vlan-id for example, 2>

name native-vlan

! Fabric A NVMe/TCP traffic

vlan <nvme-tcp-a-vlan-id for example, 41>

name nvme-tcp-a

! Fabric B NVMe/TCP traffic

vlan <nvme-tcp-b-vlan-id for example, 42>

name nvme-tcp-b

vlan <ib-mgmt-vlan-id for example, 119>

name ib-mgmt

vlan <vmotion-vlan-id for example, 1000>

name vmotion

vlan <vm-traffic-vlan-id for example, 1100>

name vm-traffic

vlan <vm-traffic-a-vlan-id for example, 1101>

name vm-traffic-a

vlan <vm-traffic-b-vlan-id for example, 1102>

name vm-traffic-b
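Because the same VLANs must exist on both vPC peers, it can help to generate the commands once and paste identical output on Cisco Nexus A and Cisco Nexus B. The following Python sketch renders the commands from this procedure from a list of VLAN IDs and names; render_vlan_config is a hypothetical helper, and the IDs are the examples from Table 2. The two-space indentation of the name lines is cosmetic.

```python
# Example VLAN IDs and names used in this procedure (see Table 2).
VLANS = [
    (2, "native-vlan"),
    (41, "nvme-tcp-a"),
    (42, "nvme-tcp-b"),
    (119, "ib-mgmt"),
    (1000, "vmotion"),
    (1100, "vm-traffic"),
    (1101, "vm-traffic-a"),
    (1102, "vm-traffic-b"),
]


def render_vlan_config(vlans):
    """Return NX-OS global-configuration lines that create and name each VLAN."""
    seen = set()
    lines = []
    for vlan_id, name in vlans:
        # VLAN 1 is the default VLAN; 0 and 4095 are reserved by 802.1Q.
        if not 2 <= vlan_id <= 4094:
            raise ValueError(f"VLAN ID {vlan_id} cannot be created")
        if vlan_id in seen:
            raise ValueError(f"VLAN ID {vlan_id} is listed twice")
        seen.add(vlan_id)
        lines += [f"vlan {vlan_id}", f"  name {name}"]
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_vlan_config(VLANS))
```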

Procedure 4. Add NTP Distribution Interface in IB-MGMT Subnet (Optional)

This procedure configures an IB-MGMT SVI on each switch so that the switches can redistribute the NTP service to optionally configured application networks that might not be able to reach an upstream NTP source.

Cisco Nexus A

Step 1. From the global configuration mode, run the following commands:

interface Vlan<ib-mgmt-vlan-id>

ip address <switch-a-ntp-ip>/<ib-mgmt-vlan-netmask-length>

no shutdown

exit

ntp peer <nexus-B-mgmt0-ip> use-vrf management

Cisco Nexus B

Step 1. From the global configuration mode, run the following commands:

interface Vlan<ib-mgmt-vlan-id>

ip address <switch-b-ntp-ip>/<ib-mgmt-vlan-netmask-length>

no shutdown

exit

ntp peer <nexus-A-mgmt0-ip> use-vrf management

Procedure 5. Create Application Network Interfaces (Optional)

This procedure creates Switched Virtual Interfaces (SVIs) and a Hot Standby Router Protocol (HSRP) configuration for each SVI. HSRP establishes an active/standby relationship between the two Nexus switches for each of these interfaces. In this design, the IB-MGMT network is routed upstream of the Nexus switches, and application networks can be handled the same way instead.

Cisco Nexus A

Step 1. Run the following commands:

int vlan 1100

no shutdown

ip address <<var_nexus_A_App-1100>>/24

hsrp 100

preempt

ip <<var_nexus_App-1100_vip>>

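Note: When the HSRP priority is not set, it defaults to 100. The router with the higher priority becomes the active router (with preempt configured, it also reclaims that role after a recovery); if the priorities are equal, the router with the higher interface IP address becomes active. In this example, Nexus B is set to priority 105 and becomes the active router for VLAN 1100, while Nexus A stays at the default and acts as standby. To spread the load, alternate which switch has the higher priority across SVIs. When the SVI on one switch is left without a priority (defaulting to 100), make sure the partner switch is set to a priority other than 100 so that the active router is deterministic.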

Cisco Nexus B

Step 1. Run the following commands:

int vlan 1100

no shutdown

ip address <<var_nexus_B_App-1100>>/24

hsrp 100

preempt

priority 105

ip <<var_nexus_App-1100_vip>>
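The HSRP election used in this procedure can be expressed as a small function. This is a minimal sketch with a hypothetical helper, hsrp_active, and hypothetical SVI addresses; it models only the steady-state outcome when preempt is configured on both switches, as in this procedure, and ignores timers and object tracking.

```python
import ipaddress


def hsrp_active(routers):
    """Return the name of the router expected to be HSRP active for one group.

    routers maps a name to (priority, interface IP); a priority of None means the
    priority command was not configured, so the HSRP default of 100 applies.
    Highest priority wins; on a tie, the highest interface IP address wins.
    """
    def rank(item):
        _name, (priority, ip) = item
        return (100 if priority is None else priority, ipaddress.ip_address(ip))

    return max(routers.items(), key=rank)[0]


if __name__ == "__main__":
    # Hypothetical SVI addresses for VLAN 1100; Nexus B has priority 105 as in this procedure.
    print(hsrp_active({"nexus-a": (None, "10.1.100.252"), "nexus-b": (105, "10.1.100.253")}))
```

With Nexus A left at the default and Nexus B at 105, the sketch selects nexus-b; raising Nexus A above 105 on another SVI would make Nexus A active for that network instead.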

Procedure 6. Create Port Channels

Cisco Nexus A

Note: For fiber optic connections to Cisco UCS systems (AOC or SFP-based), entering udld enable results in a message stating that this command is not applicable to fiber ports. This message is expected. The command enables UDLD on twinax copper connections.

Step 1. From the global configuration mode, run the following commands:

interface Po10

description vPC peer-link

!

interface Eth1/29

description <nexus-b-hostname>:Eth1/29

!

interface Eth1/30

description <nexus-b-hostname>:Eth1/30

!

interface Eth1/29-30

channel-group 10 mode active

no shutdown

!

! UCS Connectivity

!

interface Po17

description <ucs-domainname>-a

!

interface Eth1/7

udld enable

description <ucs-domainname>-a:Eth1/7

channel-group 17 mode active

no shutdown

!

interface Po18

description <ucs-domainname>-b

!

interface Eth1/8

udld enable

description <ucs-domainname>-b:Eth1/7

channel-group 18 mode active

no shutdown

!

interface Eth1/13

description <vsp>-CL1-D 100G

switchport access vlan <nvme-tcp-a-vlan>

mtu 9216

no shutdown

!

interface Eth1/14

description <vsp>-CL2-D 100G

switchport access vlan <nvme-tcp-a-vlan>

mtu 9216

no shutdown

!

! Uplink Switch Connectivity

!

interface Po136

description MGMT-Uplink

!

interface Eth1/36

description <mgmt-uplink-switch-a-hostname>:<port>

channel-group 136 mode active

no shutdown

exit

copy run start

Cisco Nexus B

Note: For fiber optic connections to Cisco UCS systems (AOC or SFP-based), entering udld enable results in a message stating that this command is not applicable to fiber ports. This message is expected. The command enables UDLD on twinax copper connections.

Step 1. From the global configuration mode, run the following commands:

interface Po10

description vPC peer-link

!

interface Eth1/29

description <nexus-a-hostname>:Eth1/29

!

interface Eth1/30

description <nexus-a-hostname>:Eth1/30

!

interface Eth1/29-30

channel-group 10 mode active

no shutdown

!

! UCS Connectivity

!

interface Po17

description <ucs-domainname>-a

!

interface Eth1/7

udld enable

description <ucs-domainname>-a:Eth1/8

channel-group 17 mode active

no shutdown

!

interface Po18

description <ucs-domainname>-b

!

interface Eth1/8

udld enable

description <ucs-domainname>-b:Eth1/8

channel-group 18 mode active

no shutdown

!

interface Eth1/13

description <vsp>-CL3-D 100G

switchport access vlan <nvme-tcp-b-vlan>

mtu 9216

no shutdown

!

interface Eth1/14

description <vsp>-CL4-D 100G

switchport access vlan <nvme-tcp-b-vlan>

mtu 9216

no shutdown

!

! Uplink Switch Connectivity

!

interface Po136

description MGMT-Uplink

!

interface Eth1/36

description <mgmt-uplink-switch-a-hostname>:<port>

channel-group 136 mode active

no shutdown

exit

copy run start

Procedure 7. Configure Port Channel Parameters

Cisco Nexus A and Cisco Nexus B. Perform these steps on both switches.

Step 1. From the global configuration mode, run the following commands to set up the vPC peer-link port channel:

interface Po10

switchport mode trunk

switchport trunk native vlan <native-vlan-id>

switchport trunk allowed vlan <nvme-tcp-a-vlan>,<nvme-tcp-b-vlan>,<ib-mgmt-vlan>,<vmotion-vlan>,<vm-traffic-vlan>,<vm-traffic-a-vlan>,<vm-traffic-b-vlan>

spanning-tree port type network

speed 100000

duplex full

Step 2. From the global configuration mode, run the following commands to set up port-channels for UCS FI X-Direct connectivity:

interface Po17

switchport mode trunk

switchport trunk native vlan <native-vlan-id>

switchport trunk allowed vlan <nvme-tcp-a-vlan>,<ib-mgmt-vlan>,<vmotion-vlan>,<vm-traffic-vlan>,<vm-traffic-a-vlan>

spanning-tree port type edge trunk

mtu 9216

!

interface Po18

switchport mode trunk

switchport trunk native vlan <native-vlan-id>

switchport trunk allowed vlan <nvme-tcp-b-vlan>,<ib-mgmt-vlan>,<vmotion-vlan>,<vm-traffic-vlan>,<vm-traffic-b-vlan>

spanning-tree port type edge trunk

mtu 9216

Step 3. From the global configuration mode, run the following commands to set up port channels for connectivity to the existing management switch(es):

interface Po136

switchport mode trunk

switchport trunk native vlan <native-vlan-id>

switchport trunk allowed vlan <ib-mgmt-vlan-id>

spanning-tree port type network

mtu 9216

!

exit

copy run start
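A VLAN that is trunked on a vPC port channel but not allowed on the peer link can lose connectivity whenever traffic has to cross the peer link, for example after a vPC member link fails. The following Python sketch checks the allowed lists from this procedure for that condition. The helper names are hypothetical, and the VLAN strings assume the example IDs from Table 2.

```python
def parse_vlan_list(text):
    """Expand an allowed-VLAN string such as '41,119,1100-1102' into a set of IDs."""
    vlans = set()
    for part in text.split(","):
        part = part.strip()
        if not part:
            continue
        if "-" in part:
            low, high = (int(x) for x in part.split("-"))
            vlans.update(range(low, high + 1))
        else:
            vlans.add(int(part))
    return vlans


def vlans_missing_from_peer_link(peer_link, port_channels):
    """Map each vPC port channel to the VLANs it trunks that the peer link does not."""
    allowed = parse_vlan_list(peer_link)
    result = {}
    for po, text in port_channels.items():
        missing = parse_vlan_list(text) - allowed
        if missing:
            result[po] = sorted(missing)
    return result


# Allowed lists from this procedure with the example VLAN IDs from Table 2 filled in.
PEER_LINK = "41,42,119,1000,1100-1102"
VPC_PORT_CHANNELS = {
    "Po17": "41,119,1000,1100,1101",
    "Po18": "42,119,1000,1100,1102",
    "Po136": "119",
}

if __name__ == "__main__":
    print(vlans_missing_from_peer_link(PEER_LINK, VPC_PORT_CHANNELS) or "All vPC VLANs are on the peer link")
```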

Procedure 8. Configure Virtual Port Channels

Cisco Nexus A

Step 1. From the global configuration mode, run the following commands:

vpc domain <nexus-vpc-domain-id for example, 10>

role priority 10

peer-keepalive destination <nexus-B-mgmt0-ip> source <nexus-A-mgmt0-ip> vrf management

peer-switch

peer-gateway

auto-recovery

delay restore 150

ip arp synchronize

!

interface Po10

vpc peer-link

!

interface Po17

vpc 17

!

interface Po18

vpc 18

!

interface Po136

vpc 136

!

exit

copy run start

Cisco Nexus B

Step 1. From the global configuration mode, run the following commands:

vpc domain <nexus-vpc-domain-id for example, 10>

role priority 20

peer-keepalive destination <nexus-A-mgmt0-ip> source <nexus-B-mgmt0-ip> vrf management

peer-switch

peer-gateway

auto-recovery

delay restore 150

ip arp synchronize

!

interface Po10

vpc peer-link

!

interface Po17

vpc 17

!

interface Po18

vpc 18

!

interface Po136

vpc 136

!

exit

copy run start
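The vPC number assigned to a port channel that connects a downstream device must be identical on both vPC peers; otherwise the two switches do not treat those links as one vPC. The following Python sketch compares the interface PoN / vpc N pairs from the two switch configurations. It assumes, as in this guide, that both switches use the same port-channel numbers; the helper names and sample snippets are hypothetical.

```python
import re


def vpc_numbers(config):
    """Map each port channel to its vPC number from 'interface PoN' ... 'vpc N' lines."""
    mapping, current = {}, None
    for raw in config.splitlines():
        line = raw.strip()
        iface = re.fullmatch(r"interface (Po\d+)", line)
        vpc = re.fullmatch(r"vpc (\d+)", line)  # 'vpc peer-link' and 'vpc domain N' do not match
        if iface:
            current = iface.group(1)
        elif vpc and current:
            mapping[current] = int(vpc.group(1))
        elif line.startswith("interface") or line in ("!", "exit"):
            current = None  # left the port-channel context
    return mapping


def vpc_mismatches(config_a, config_b):
    """List (port channel, vPC on A, vPC on B) wherever the two peers disagree."""
    a, b = vpc_numbers(config_a), vpc_numbers(config_b)
    return [(po, a.get(po), b.get(po)) for po in sorted(a.keys() | b.keys()) if a.get(po) != b.get(po)]


if __name__ == "__main__":
    nexus_a = "interface Po17\nvpc 17\n!\ninterface Po18\nvpc 18\n"
    nexus_b = "interface Po17\nvpc 11\n!\ninterface Po18\nvpc 18\n"
    print(vpc_mismatches(nexus_a, nexus_b))  # [('Po17', 17, 11)]
```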

Procedure 9. Verify Configuration

The Nexus configuration is now complete and can be verified with a number of commands including:

● show port-channel summary

● show vpc brief

● show interface brief

● show cdp neighbors
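The output of show port-channel summary can also be checked programmatically. The following Python sketch is a hypothetical parser that assumes the usual NX-OS column layout (group, port channel with flags, type, protocol, member ports) and reports port channels not flagged S (switched) and U (up), and member ports not flagged P (up in port-channel). The sample rows in the usage check are illustrative, member lists that wrap onto continuation lines are not inspected, and structured output (for example, through NX-API, which Procedure 1 enables with feature nxapi) may be more robust.

```python
import re

# A summary row looks like: "10    Po10(SU)    Eth      LACP      Eth1/29(P)   Eth1/30(P)"
ROW = re.compile(r"^(\d+)\s+(Po\d+)\((\w+)\)\s+\S+\s+\S+\s*(.*)$")
MEMBER = re.compile(r"(\S+?)\((\w+)\)")


def port_channel_problems(output):
    """Report port channels not flagged SU and member ports not flagged P."""
    problems = []
    for line in output.splitlines():
        row = ROW.match(line)
        if not row:
            continue  # legend, header, separator, or continuation line
        _group, po, flags, members = row.groups()
        if flags != "SU":
            problems.append(f"{po}: flags {flags}, expected SU")
        for port, flag in MEMBER.findall(members):
            if flag != "P":
                problems.append(f"{po}: member {port} flag {flag}, expected P")
    return problems
```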

Cisco Intersight Managed Mode Configuration

This chapter contains the following:

● Configure Server Profile Template

● Cisco UCS IMM Setup Completion

● Tunneled KVM Setting within System

The Cisco Intersight platform is a management solution delivered as a service with embedded analytics for Cisco and third-party IT infrastructures. The Cisco Intersight managed mode (also referred to as Cisco IMM) is a new architecture that manages Cisco Unified Computing System (Cisco UCS) fabric interconnect–attached systems through a Redfish-based standard model. Cisco Intersight managed mode standardizes both policy and operation management for the Cisco UCS X215c M8 compute nodes used in this deployment guide.

Note: Cisco UCS C-Series M7 and M8 servers, connected and managed through Cisco UCS FIs, are also supported by IMM. For a complete list of supported platforms, go to: https://www.cisco.com/c/en/us/td/docs/unified_computing/Intersight/b_Intersight_Managed_Mode_Configuration_Guide/b_intersight_managed_mode_guide_chapter_01010.html

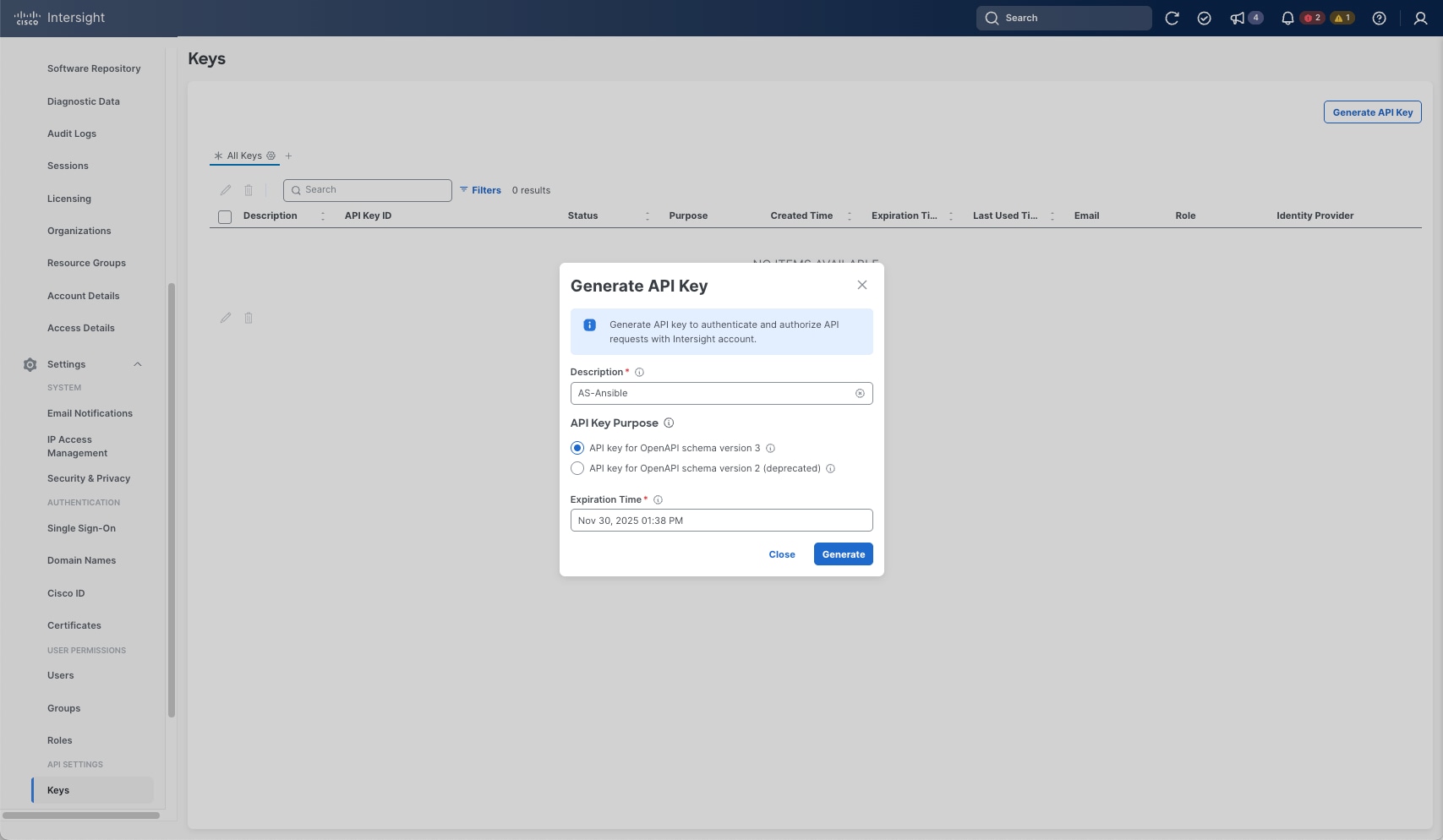

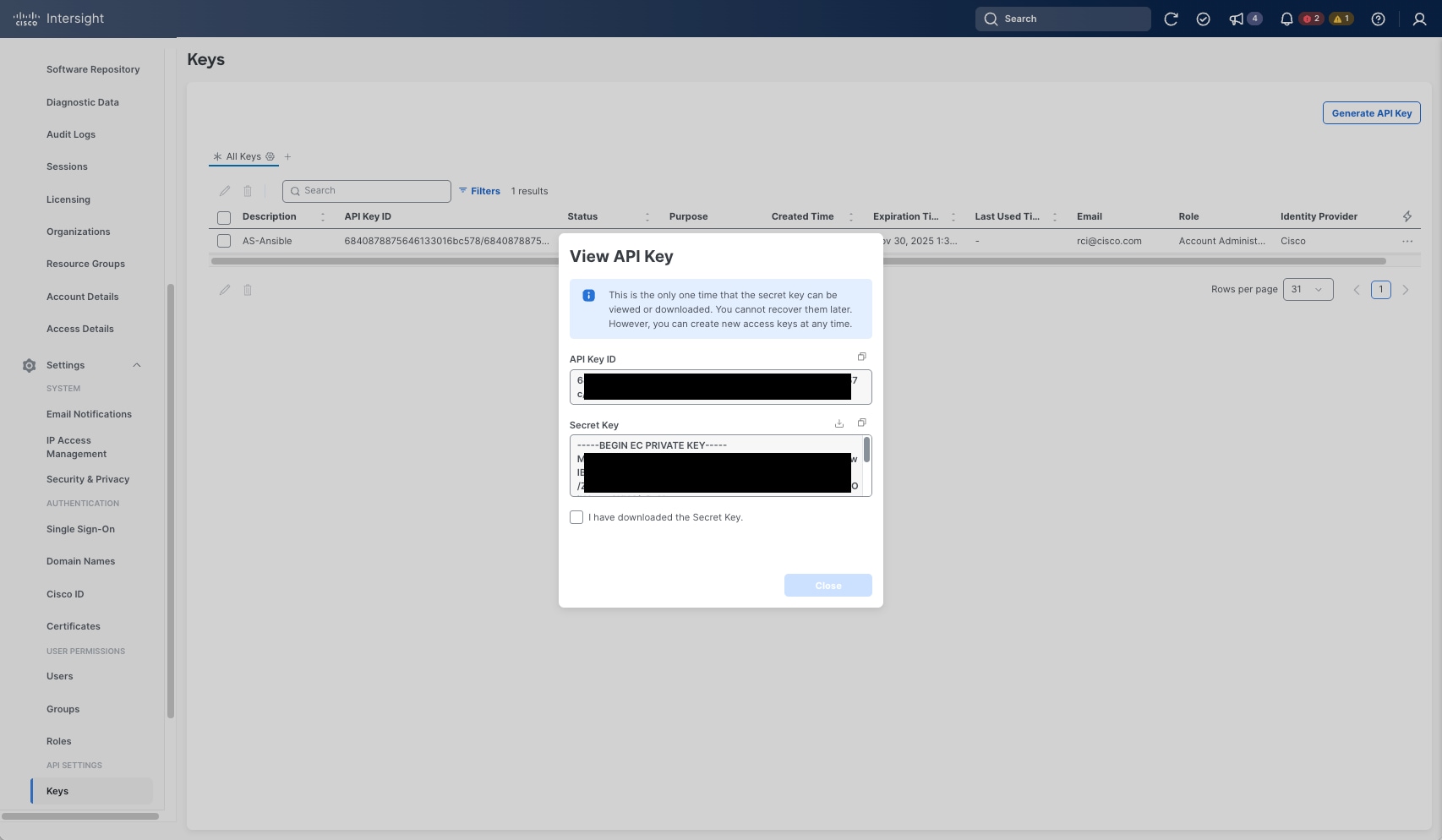

Procedure 1. Set Up Cisco Intersight Account

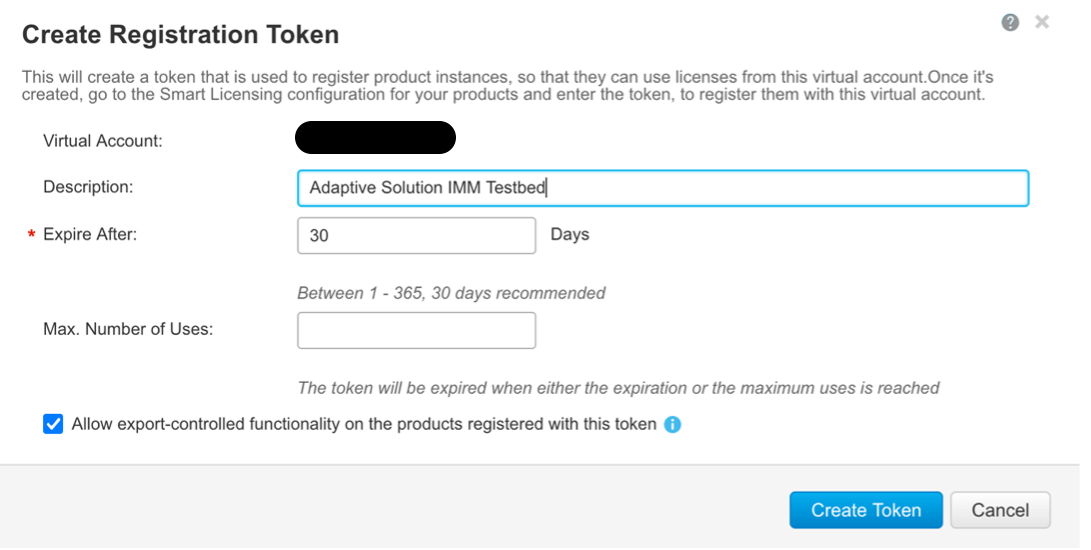

When setting up a new Cisco Intersight account (as explained in this document), the account must be enabled for Cisco Smart Software Licensing.

Note: Skip this procedure if starting out with a trial, or if a token has already been generated.

Step 1. Log into the Cisco Smart Licensing portal: https://software.cisco.com/software/smart-licensing/alerts.

Step 2. Verify that the correct virtual account is selected.

Step 3. Go to Inventory > General and generate a new token for product registration.

Step 4. Copy this newly created token.

Procedure 2. Setting Up Cisco Intersight Licensing

Step 1. Go to https://intersight.com and click Create an account if not using an existing account.

Step 2. Select the appropriate region for the account. Click Next.

Step 3. Read and accept the license agreement. Click Next.

Step 4. Provide an Account Name. Click Create.

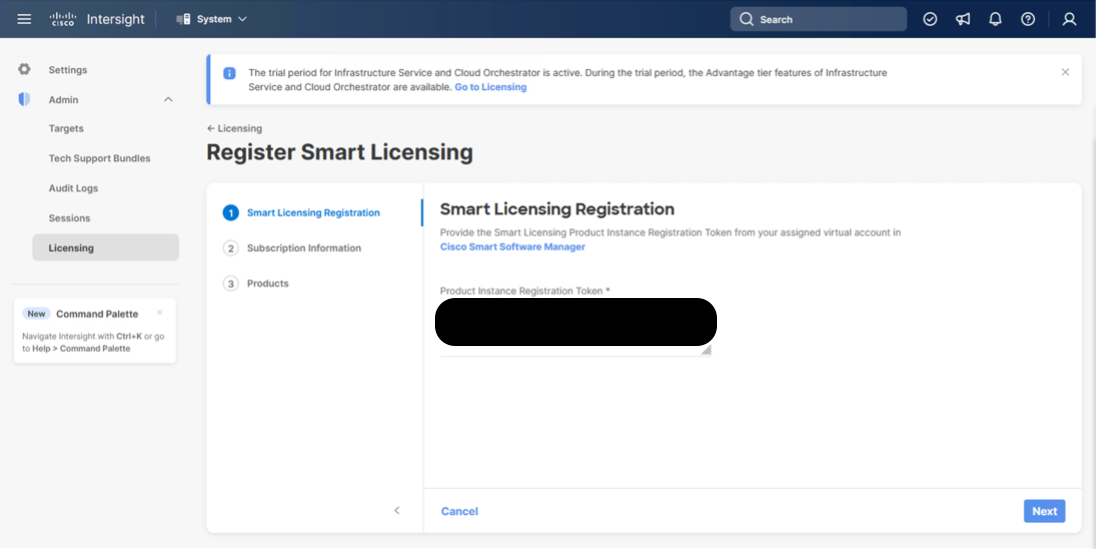

Step 5. Select either Register Smart Licensing (if Smart Licensing has already been established) or Start Trial.

Step 6. If you are registering, Register Smart Licensing takes you to System > Admin > Licensing.

Step 7. Provide the copied token from the Cisco Smart Licensing Portal. Click Next.

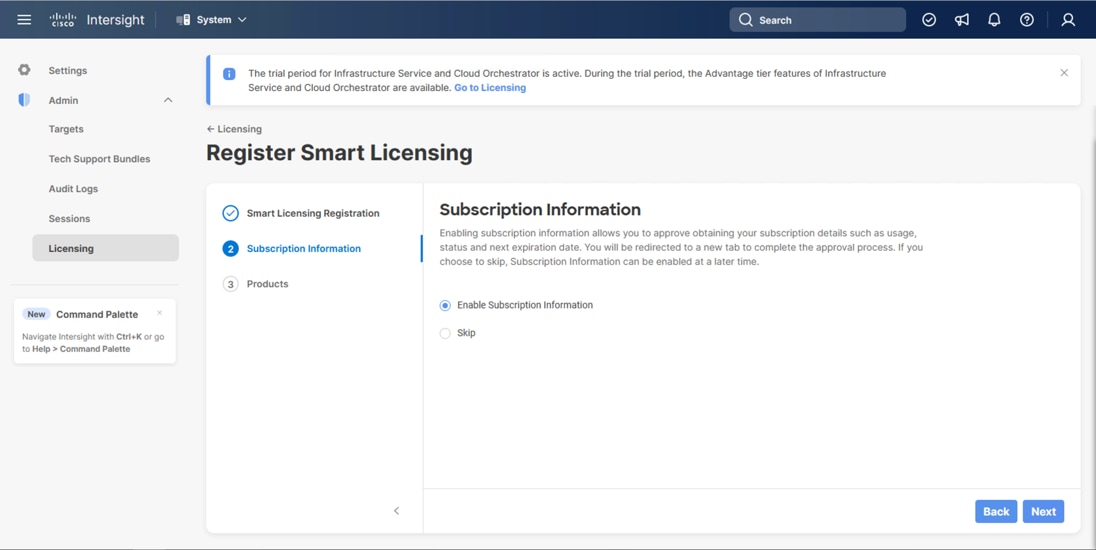

Step 8. Select Enable or Skip subscription information and click Next.

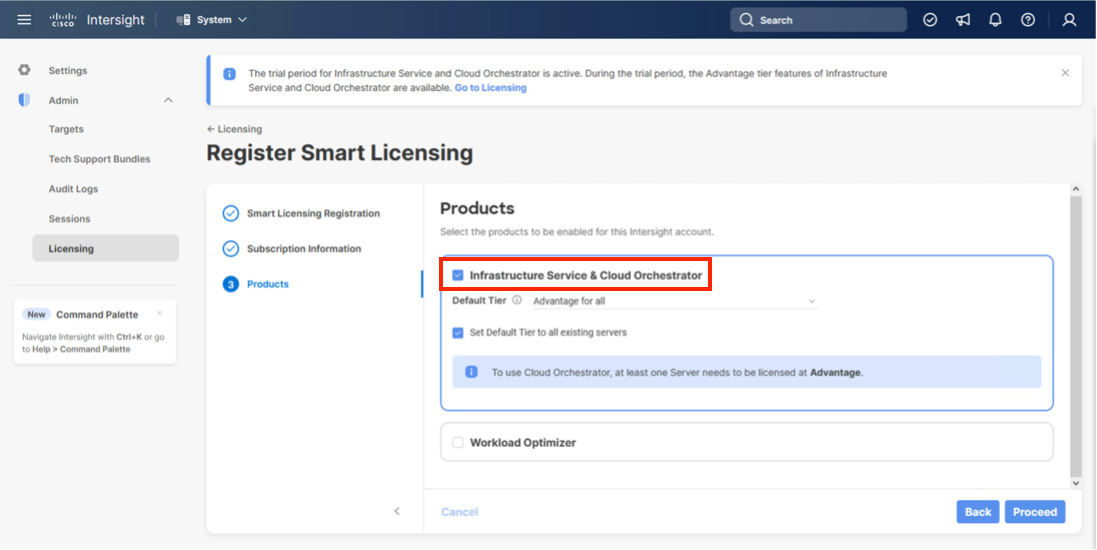

Step 9. Select the Infrastructure Service & Cloud Orchestrator option, adjust the default licensing tier if needed, and specify whether the tier should also apply to existing servers. Click Proceed, and then click Confirm when asked to verify the options.

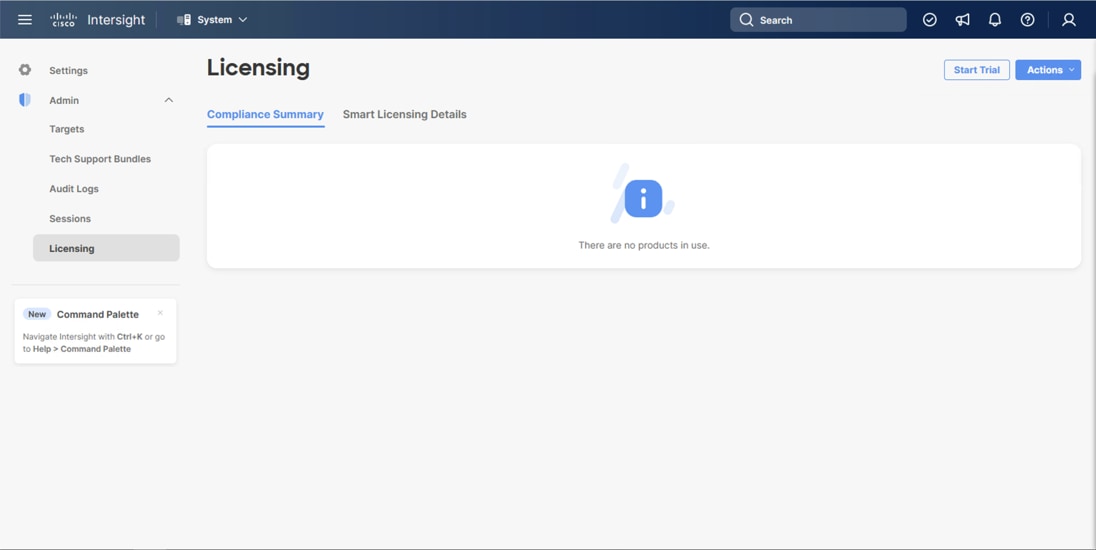

On successfully syncing the Smart Licensing, the following page displays:

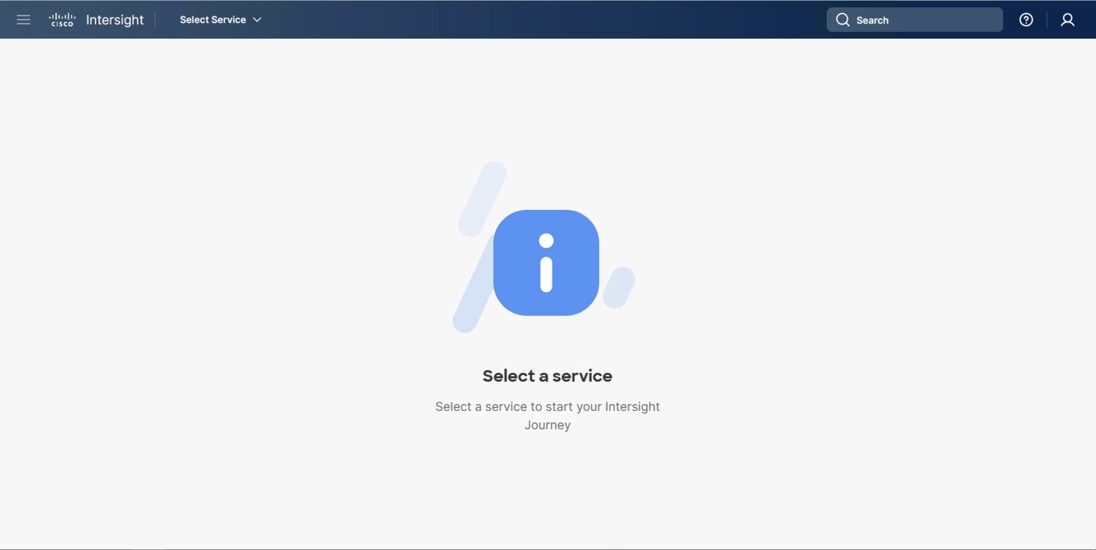

On successfully creating the Intersight account with trial licensing, the following page displays:

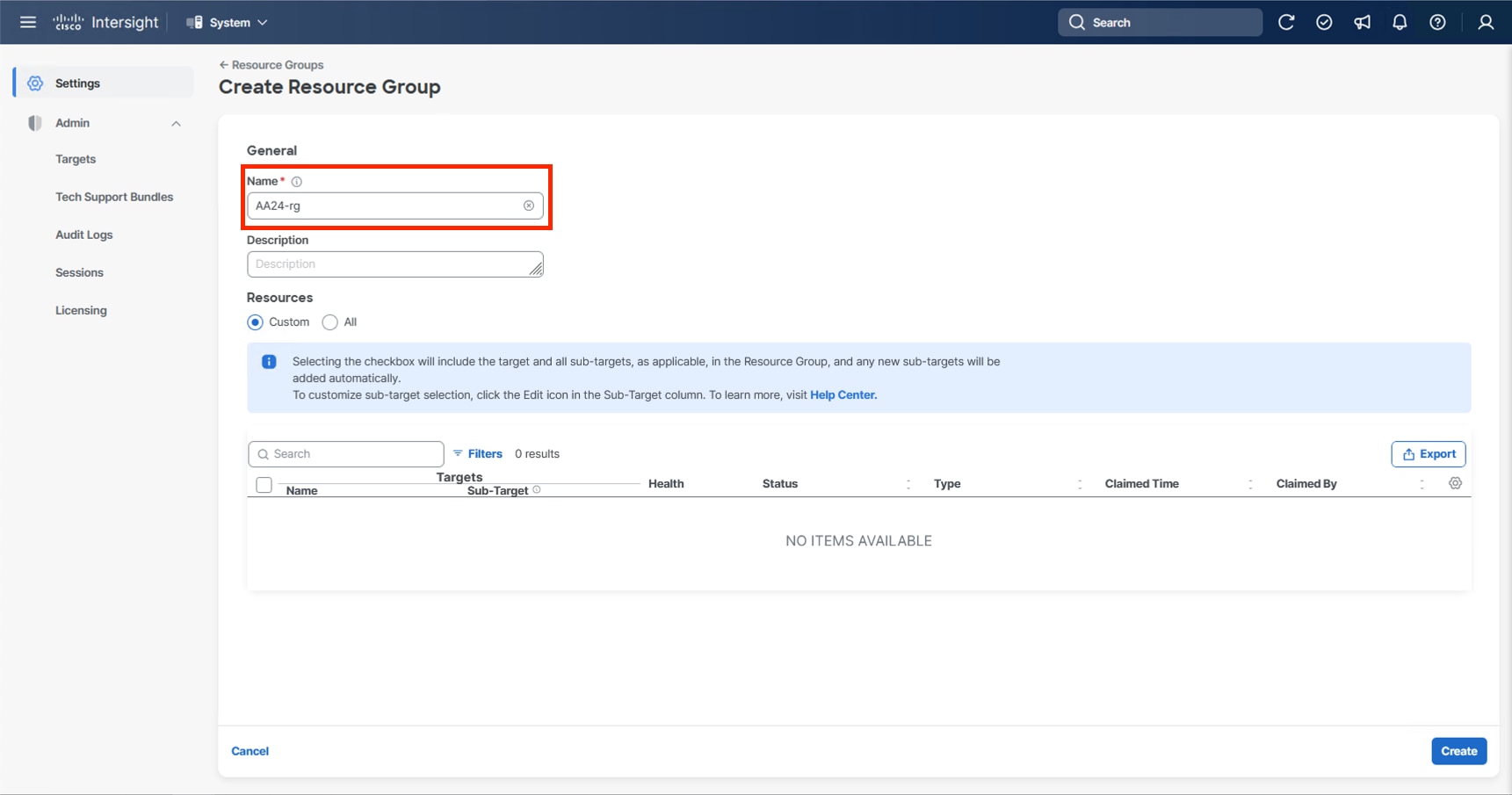

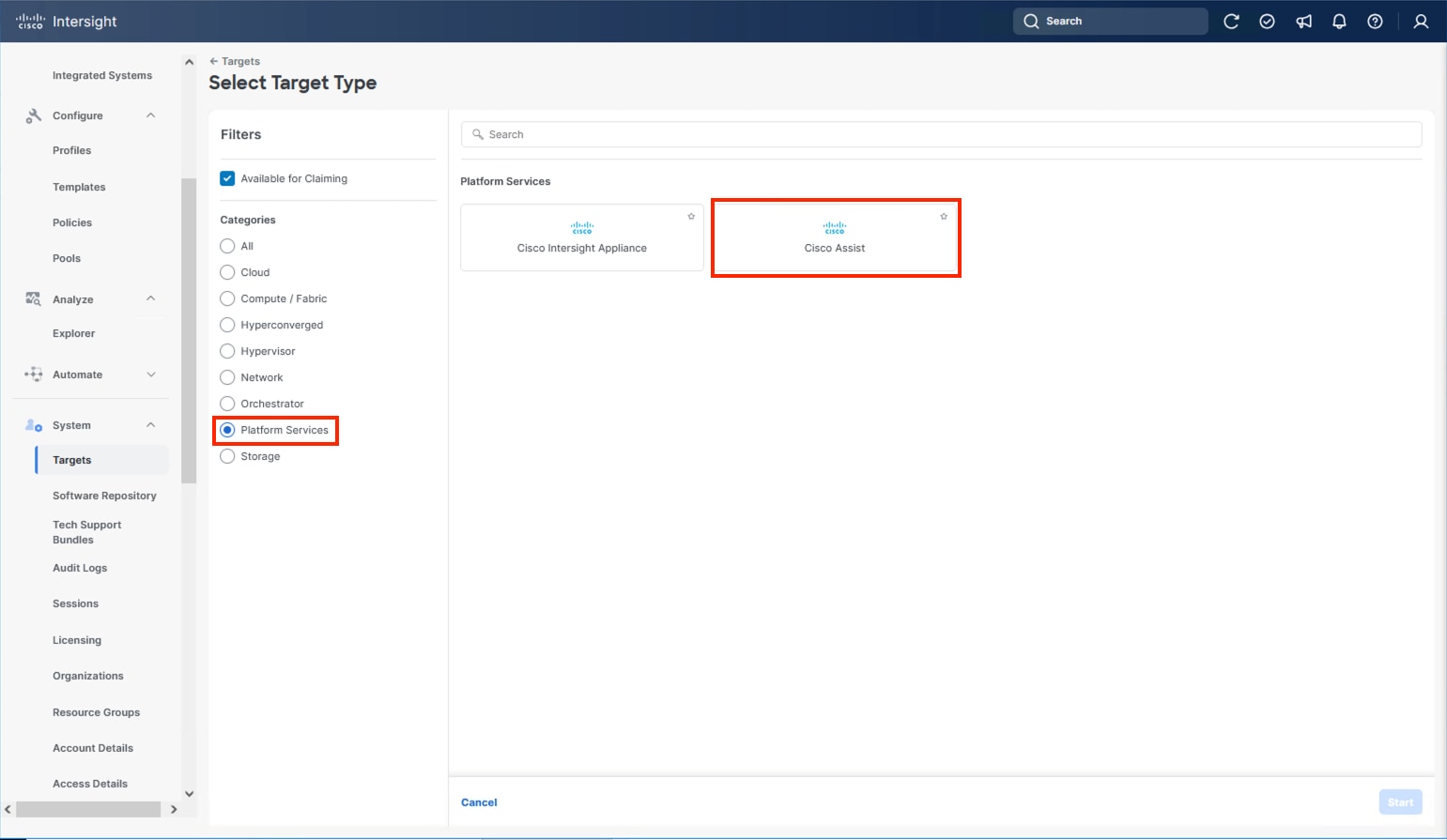

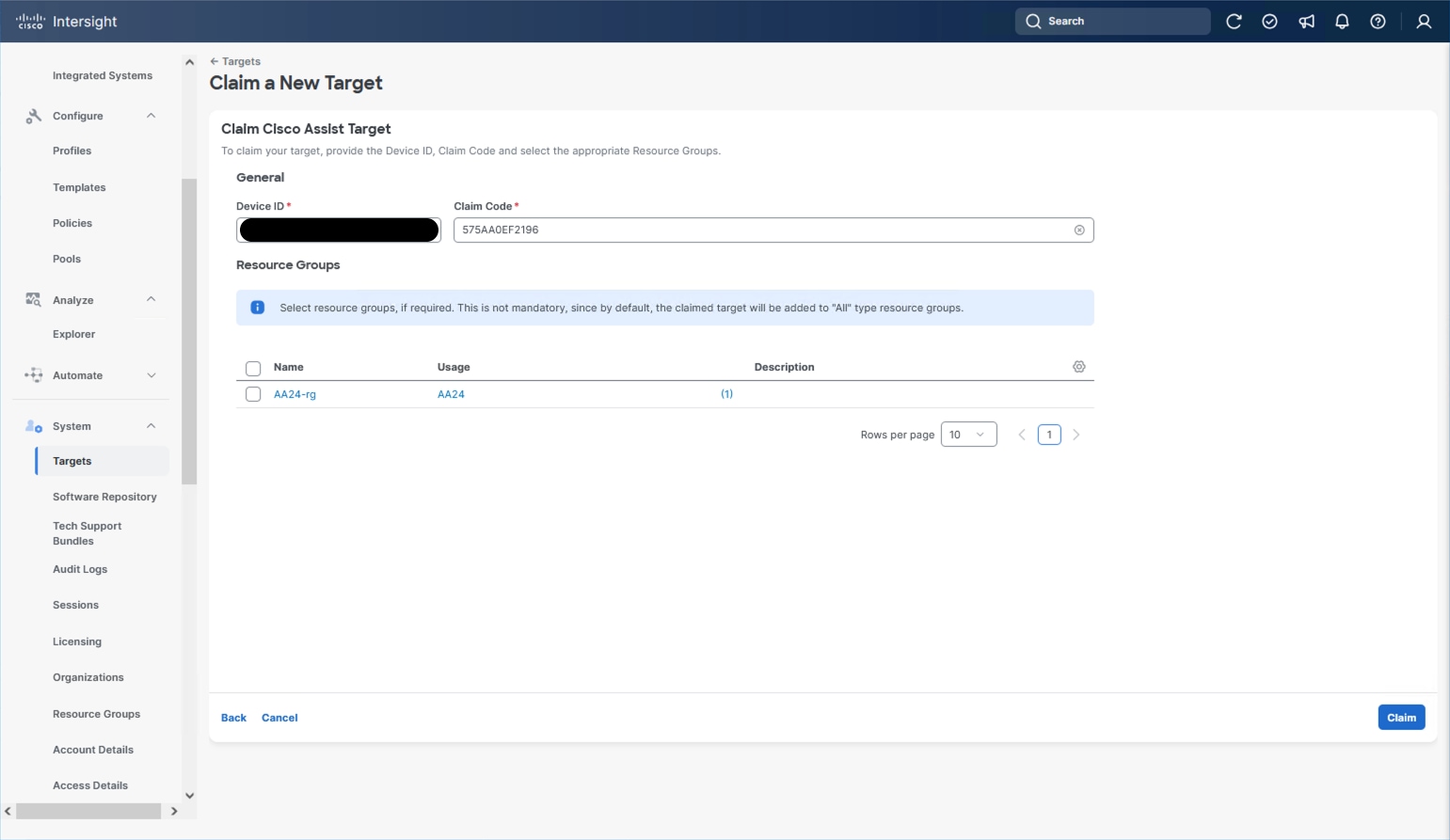

Procedure 3. Configure Cisco Intersight Resource Group

In this procedure, a Cisco Intersight resource group is created where resources such as targets will be logically grouped. In this deployment, a single resource group is created to host all the resources, but you can choose to create multiple resource groups for granular control of the resources.

Step 1. Log in to Cisco Intersight.

Step 2. At the top, select System. On the left, click Settings (the gear icon).

Step 3. Click Resource Groups in the middle panel.

Step 4. Click + Create Resource Group in the top-right corner.

Step 5. Provide a name for the Resource Group (for example, AA24-rg).

Step 6. Click Create.

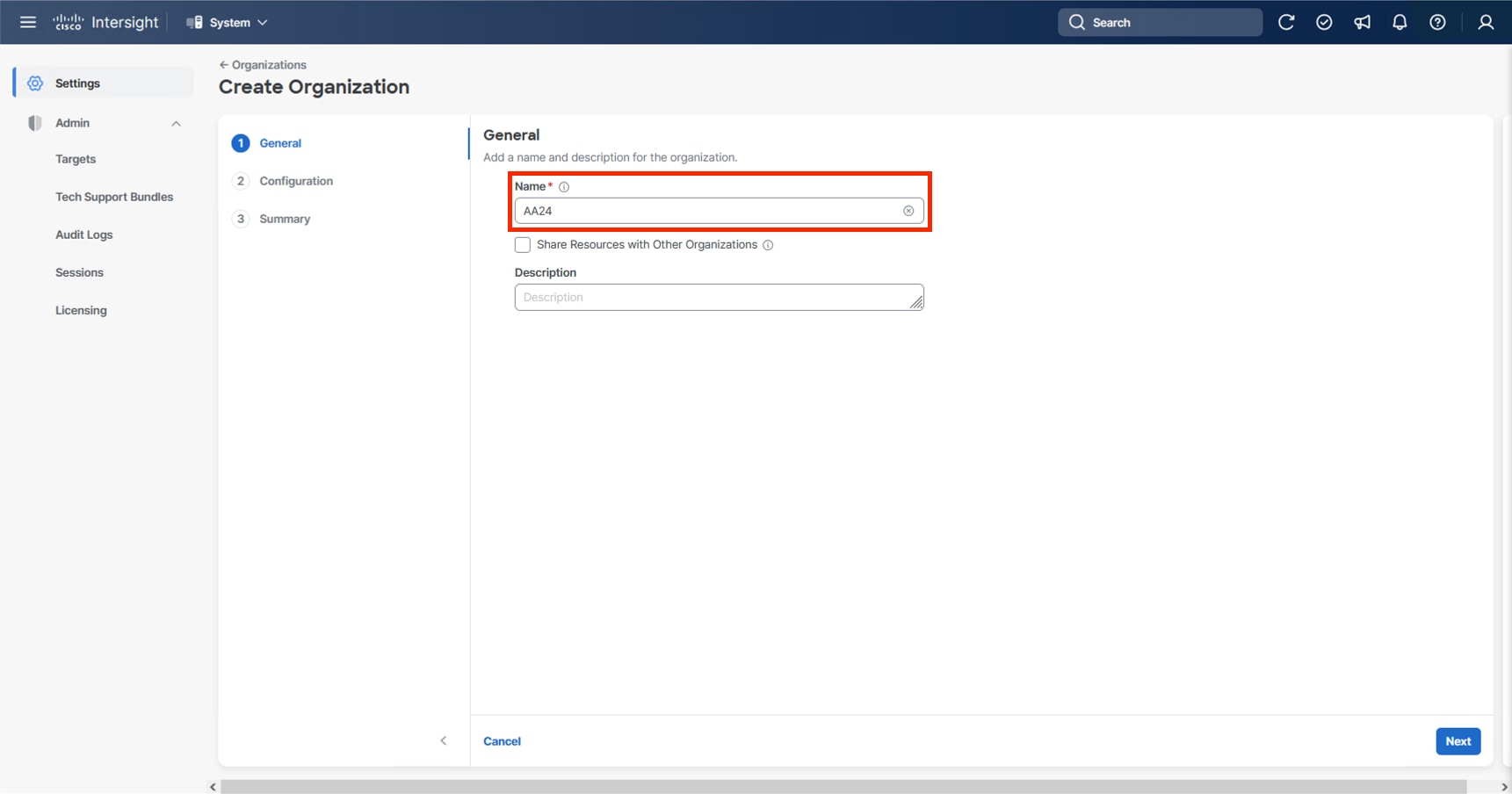

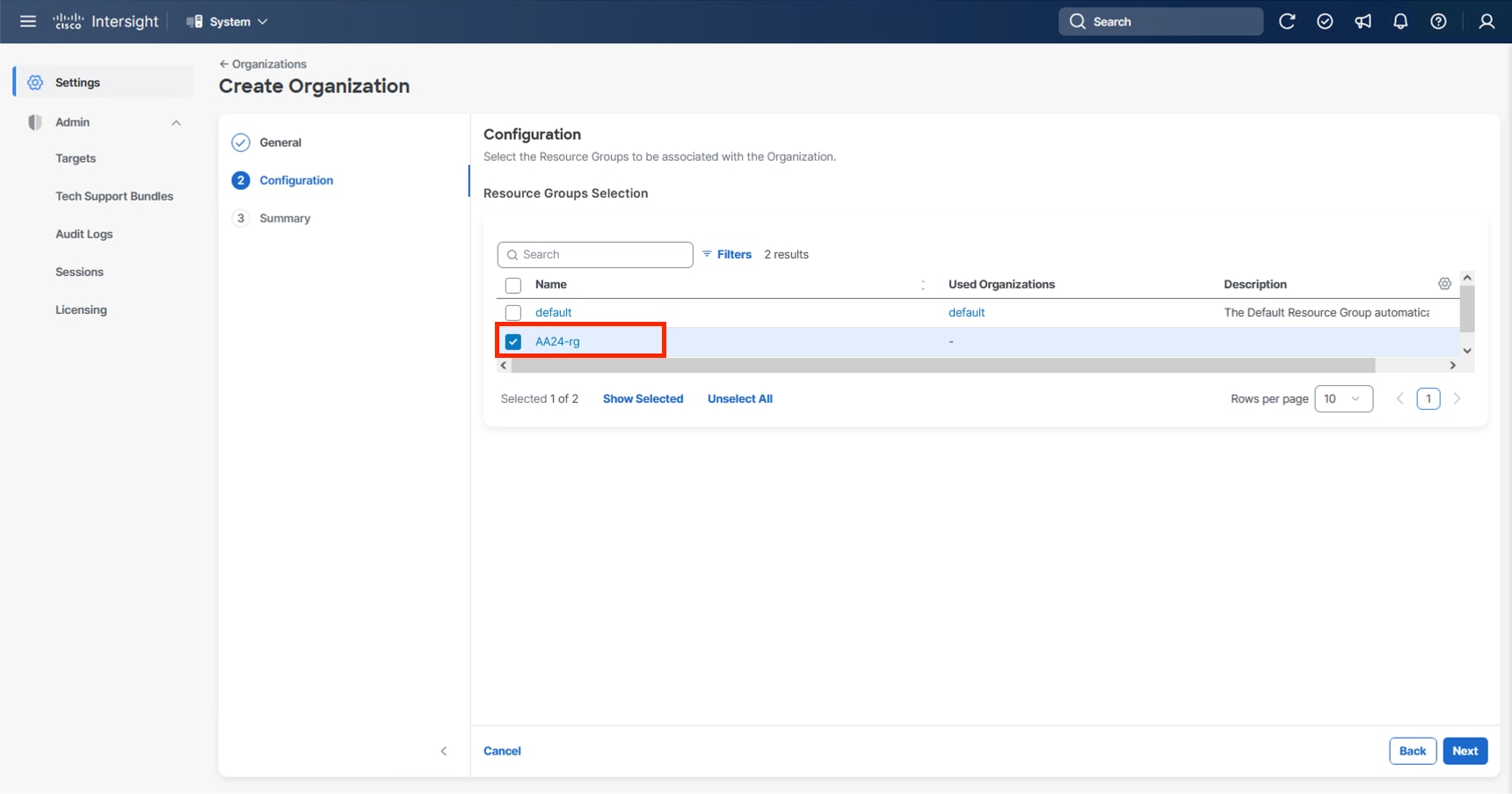

Procedure 4. Configure Cisco Intersight Organization

This procedure creates an Intersight organization where all Cisco Intersight Managed Mode configurations, including policies, are defined. To create a new organization, follow these steps:

Step 1. Log in to the Cisco Intersight portal.

Step 2. At the top, select System. On the left, click Settings (the gear icon).

Step 3. Click Organizations in the middle panel.

Step 4. Click + Create Organization in the top-right corner.

Step 5. Provide a name for the organization (for example, AA24) and click Next.

Step 6. Select the Resource Group created in the previous procedure (for example, AA24-rg) and click Next.

Step 7. Review the Summary and click Create.

The UCS domain formed by the fabric interconnects is added directly to the account as a target. The other infrastructure components are onboarded as targets through the Intersight Assist Appliance after it has been deployed, as explained later in the Management Configuration section.

Procedure 1. Configure the Cisco UCS X-Direct Fabric Interconnects

The Cisco UCS X-Direct fabric interconnects support UCSM mode but default to IMM mode with the currently shipping code. This guide covers IMM deployment and configuration. For UCSM configuration, follow previous CVD examples or refer to the current Cisco documentation covering UCSM configuration.

Note: X-Direct configuration is started through the serial console. This connection runs at 115200 baud instead of the 9600 baud used by many other Cisco devices.

To start the configuration, follow these steps:

Step 1. Configure Fabric Interconnect A (FI-A) by connecting to the FI-A console. On the Basic System Configuration Dialog screen, set the management mode to Intersight:

Cisco UCS Fabric Interconnect A

Enter the configuration method. (console/gui) ? console

The Fabric interconnect will be configured in the intersight managed mode. Choose (y/n) to proceed: y

Enforce strong password? (y/n) [y]: Enter

Enter the password for "admin": <password>

Confirm the password for "admin": <password>

Enter the switch fabric (A/B) []: A

Enter the system name: <ucs-cluster-name>

Physical Switch Mgmt0 IP address : <ucsa-mgmt-ip>

Physical Switch Mgmt0 IPv4 netmask : <ucs-mgmt-mask>

IPv4 address of the default gateway : <ucs-mgmt-gateway>

DNS IP address : <dns-server-1-ip>

Configure the default domain name? (yes/no) [n]: n <optional>

Following configurations will be applied:

Management Mode=intersight

Switch Fabric=A

System Name=<ucs-cluster-name>

Enforced Strong Password=yes

Physical Switch Mgmt0 IP Address=<ucsa-mgmt-ip>

Physical Switch Mgmt0 IP Netmask=<ucs-mgmt-mask>

Default Gateway=<ucs-mgmt-gateway>

DNS Server=<dns-server-1-ip>

Apply and save the configuration (select 'no' if you want to re-enter)? (yes/no): yes

Step 2. After applying the settings, make sure you can ping the fabric interconnect management IP address. When Fabric Interconnect A is correctly set up and is available, Fabric Interconnect B will automatically discover Fabric Interconnect A during its setup process as shown in the next step.

Step 3. Configure Fabric Interconnect B (FI-B). For the configuration method, choose console. Fabric Interconnect B will detect the presence of Fabric Interconnect A and will prompt you to enter the admin password for Fabric Interconnect A. Provide the management IP address for Fabric Interconnect B and apply the configuration:

Cisco UCS Fabric Interconnect B

Enter the configuration method. (console/gui) ? console

Installer has detected the presence of a peer Fabric interconnect. This Fabric interconnect will be added to the cluster. Continue (y/n) ? y

Enter the admin password of the peer Fabric interconnect: <password>

Connecting to peer Fabric interconnect... done

Retrieving config from peer Fabric interconnect... done

Peer Fabric interconnect management mode : intersight

Peer Fabric interconnect Mgmt0 IPv4 Address: <ucsa-mgmt-ip>

Peer Fabric interconnect Mgmt0 IPv4 Netmask: <ucs-mgmt-mask>

Peer FI is IPv4 Cluster enabled. Please Provide Local Fabric Interconnect Mgmt0 IPv4 Address

Physical Switch Mgmt0 IP address : <ucsb-mgmt-ip>

Apply and save the configuration (select 'no' if you want to re-enter)? (yes/no): yes

Applying configuration. Please wait.
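Before moving on, it can help to sanity-check the values entered at the two consoles. The following Python sketch uses only the standard `ipaddress` module to confirm that the FI-A and FI-B Mgmt0 addresses and the default gateway are distinct and share one management subnet. The function name and the sample addresses are hypothetical stand-ins for `<ucsa-mgmt-ip>`, `<ucsb-mgmt-ip>`, `<ucs-mgmt-mask>`, and `<ucs-mgmt-gateway>`; this is an offline check and does not replace the ping test in Step 2.

```python
import ipaddress


def validate_fi_mgmt(fi_a_ip: str, fi_b_ip: str, netmask: str, gateway: str) -> list:
    """Return a list of problems with the FI Mgmt0 settings; an empty list means none found."""
    # The management subnet is derived from FI-A's address and netmask.
    network = ipaddress.IPv4Network(f"{fi_a_ip}/{netmask}", strict=False)
    entries = {
        "FI-A Mgmt0": ipaddress.IPv4Address(fi_a_ip),
        "FI-B Mgmt0": ipaddress.IPv4Address(fi_b_ip),
        "default gateway": ipaddress.IPv4Address(gateway),
    }
    problems = []
    # All three addresses must be distinct.
    if len(set(entries.values())) != len(entries):
        problems.append("FI-A, FI-B, and the gateway must use distinct addresses")
    for name, addr in entries.items():
        # FI-B and the gateway must share FI-A's management subnet.
        if addr not in network:
            problems.append(f"{name} {addr} is outside {network}")
        # Network and broadcast addresses cannot be assigned to a host.
        elif addr in (network.network_address, network.broadcast_address):
            problems.append(f"{name} {addr} is the network or broadcast address")
    return problems


# Hypothetical values for <ucsa-mgmt-ip>, <ucsb-mgmt-ip>, <ucs-mgmt-mask>, <ucs-mgmt-gateway>.
print(validate_fi_mgmt("192.168.168.11", "192.168.168.12", "255.255.255.0", "192.168.168.254"))  # prints []
```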

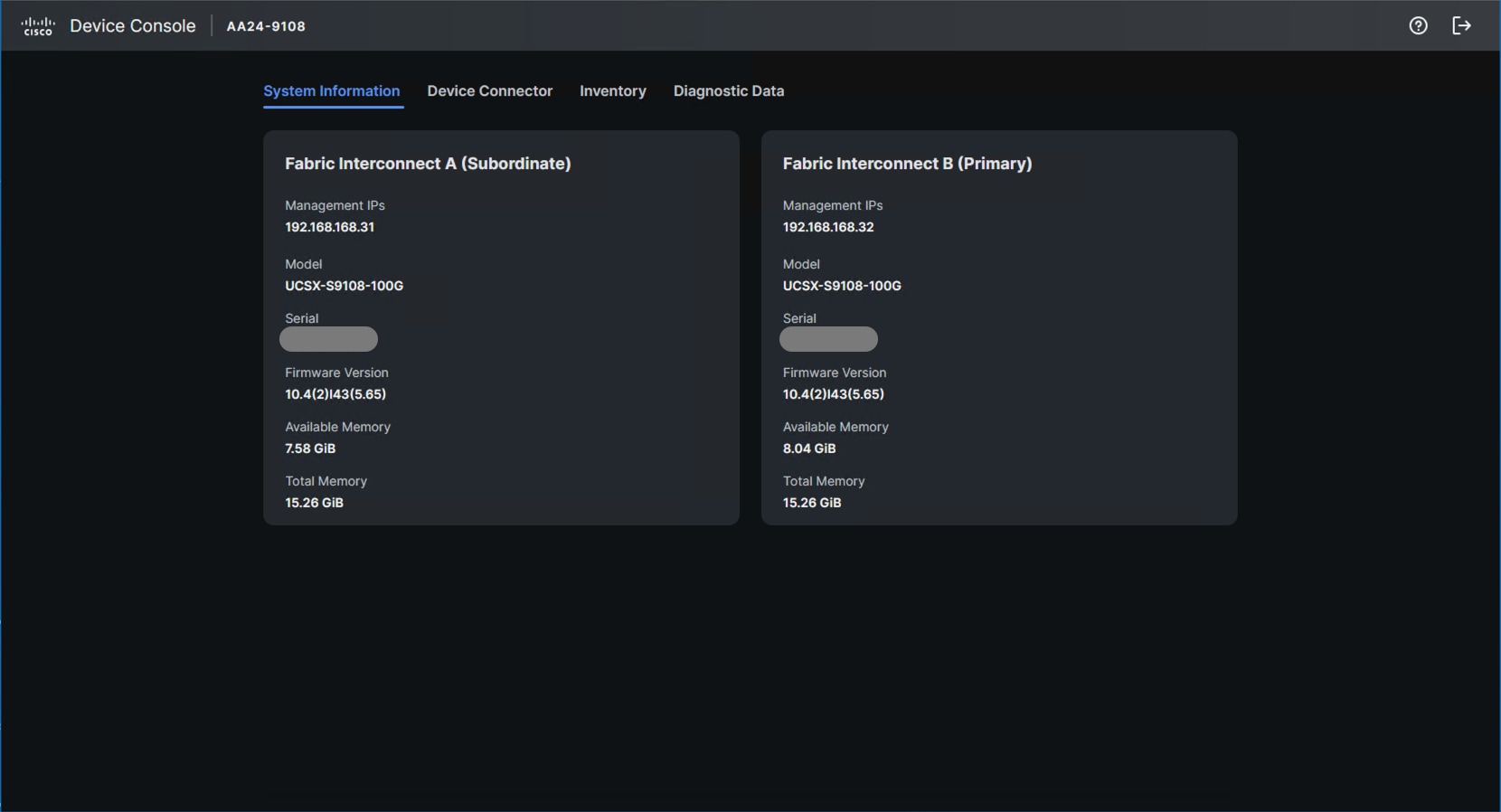

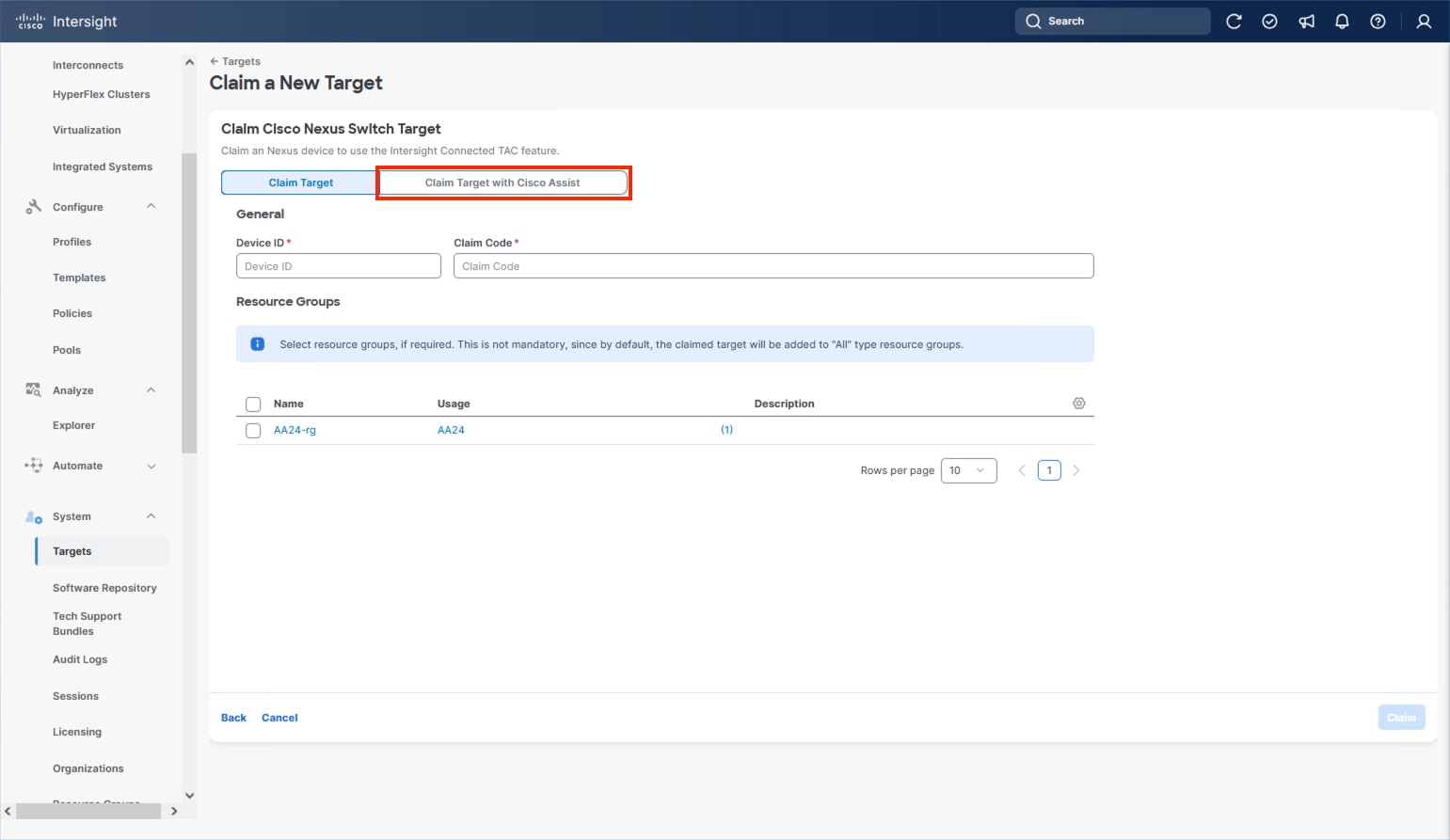

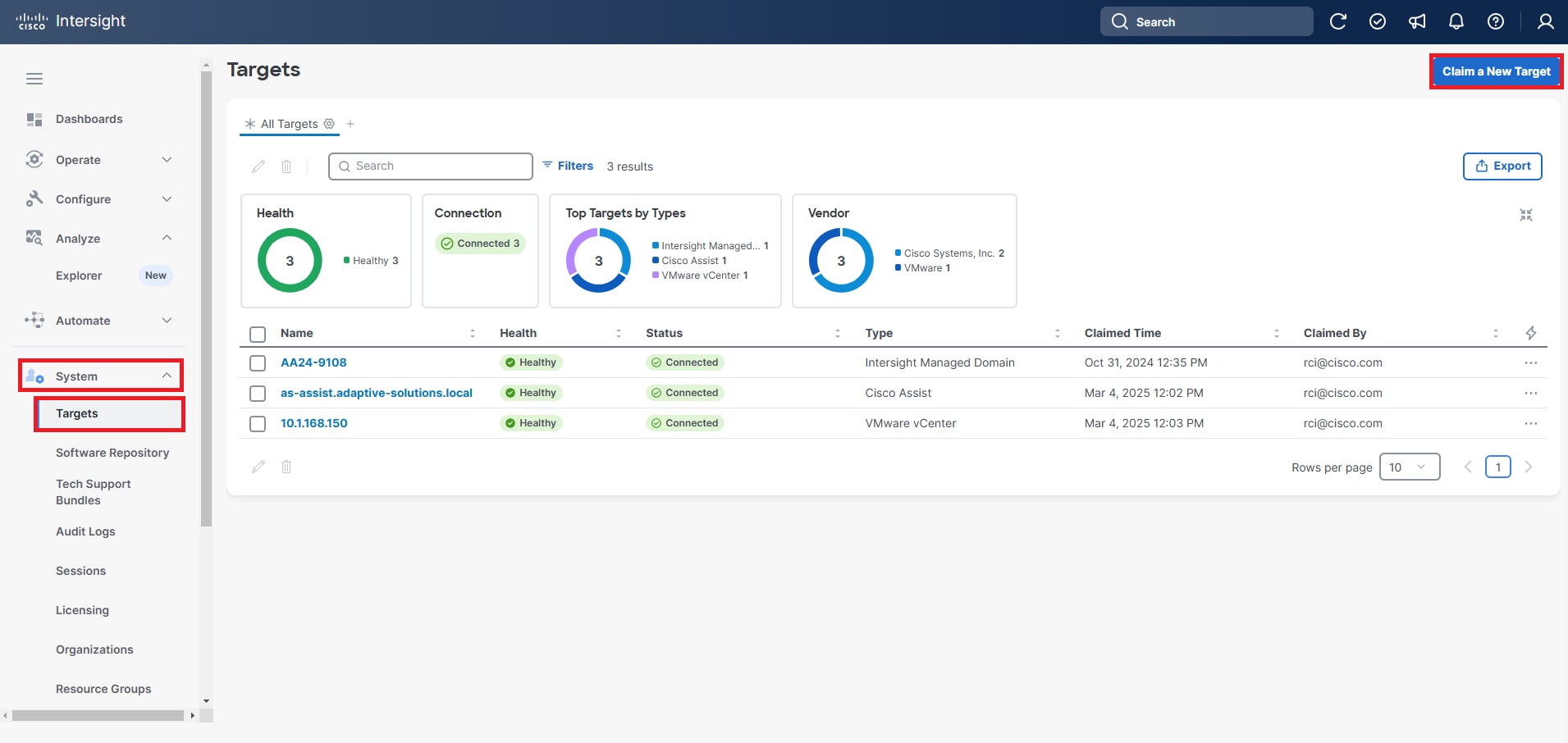

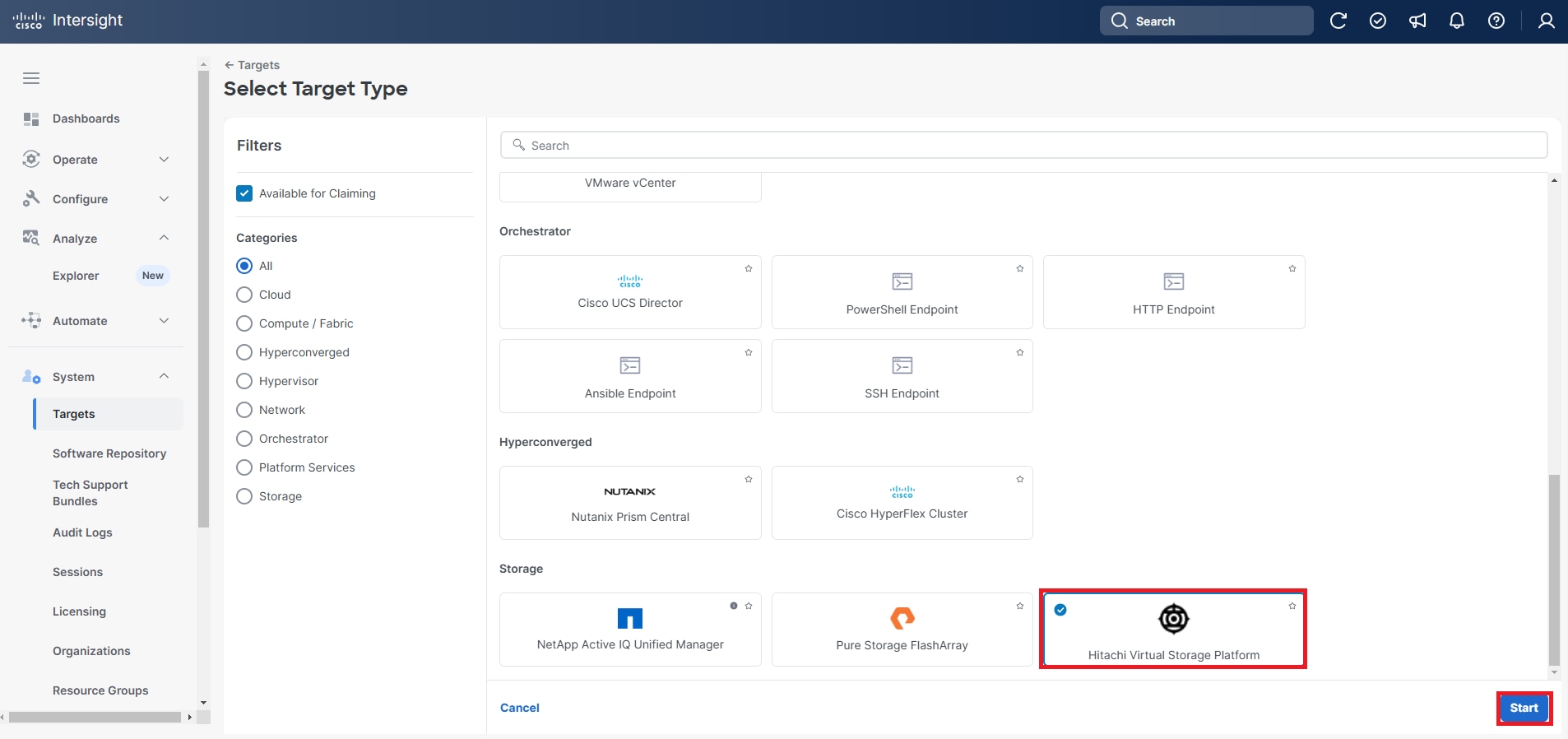

Procedure 2. Claim Cisco UCS Fabric Interconnects in Cisco Intersight

Note: With the initial Basic System Configuration Dialog previously completed for the fabric interconnects, log into the Fabric Interconnect A Device Console using a web browser to capture the Cisco Intersight connectivity information.

Step 1. Use the management IP address of Fabric Interconnect A to access the device from a web browser and the previously configured admin password to log into the device.

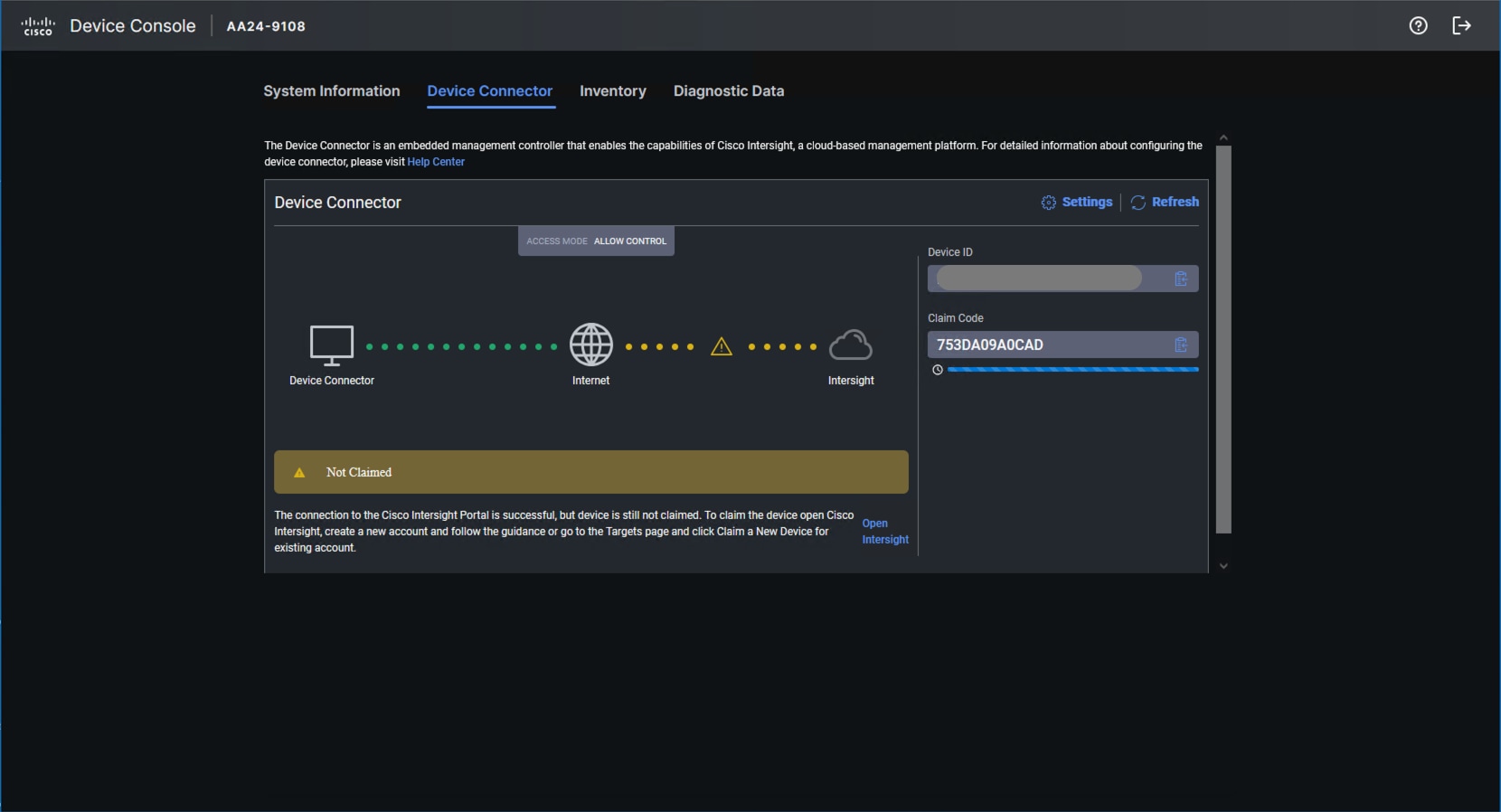

Step 2. Under DEVICE CONNECTOR, the current device status will show Not claimed. Note or copy the Device ID and Claim Code information for claiming the device in Cisco Intersight.

Step 3. Log in to Cisco Intersight.

Step 4. At the top, select System. On the left, click Administration > Targets.

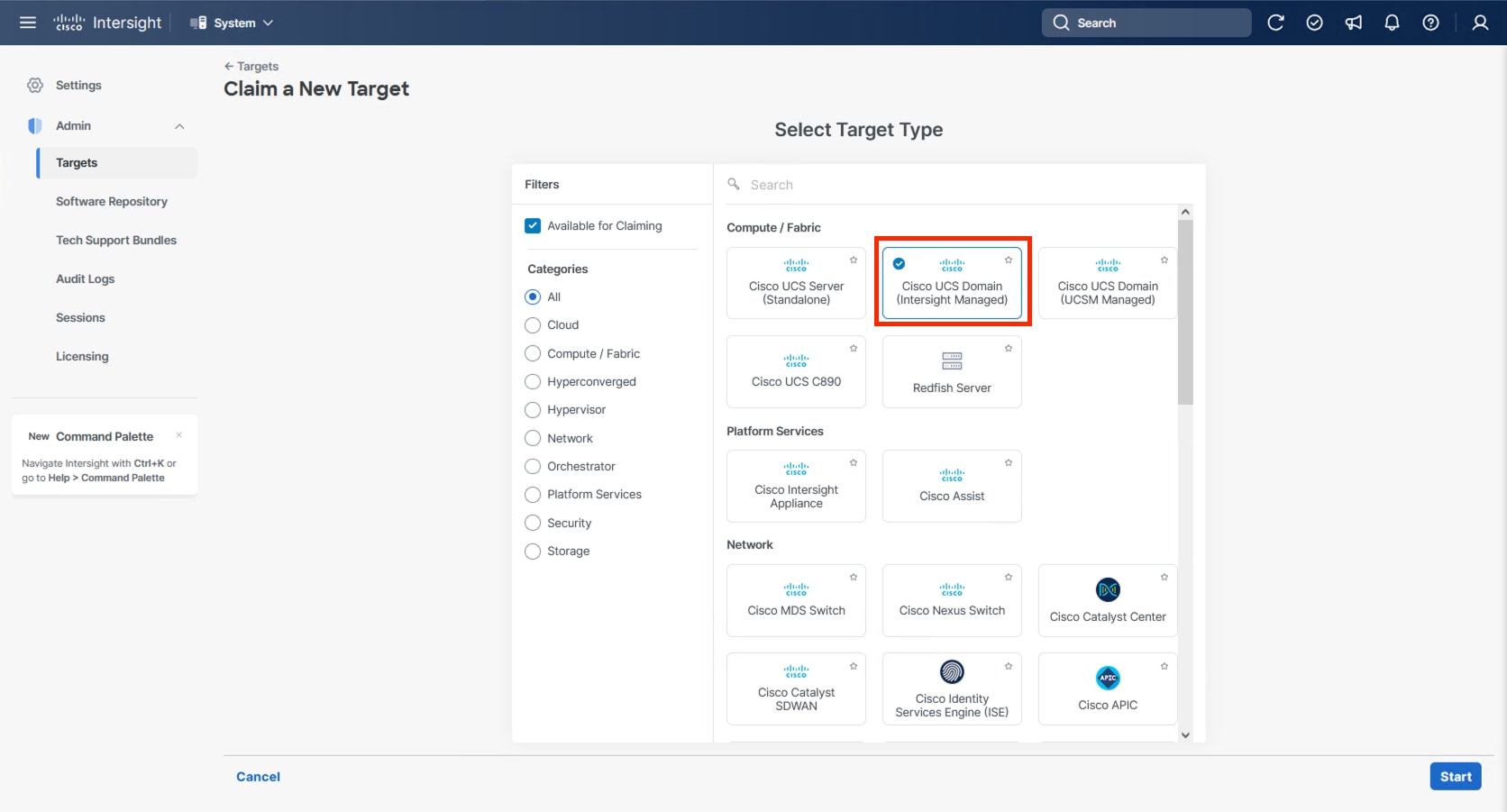

Step 5. Click Claim a New Target.

Step 6. Select Cisco UCS Domain (Intersight Managed) and click Start.

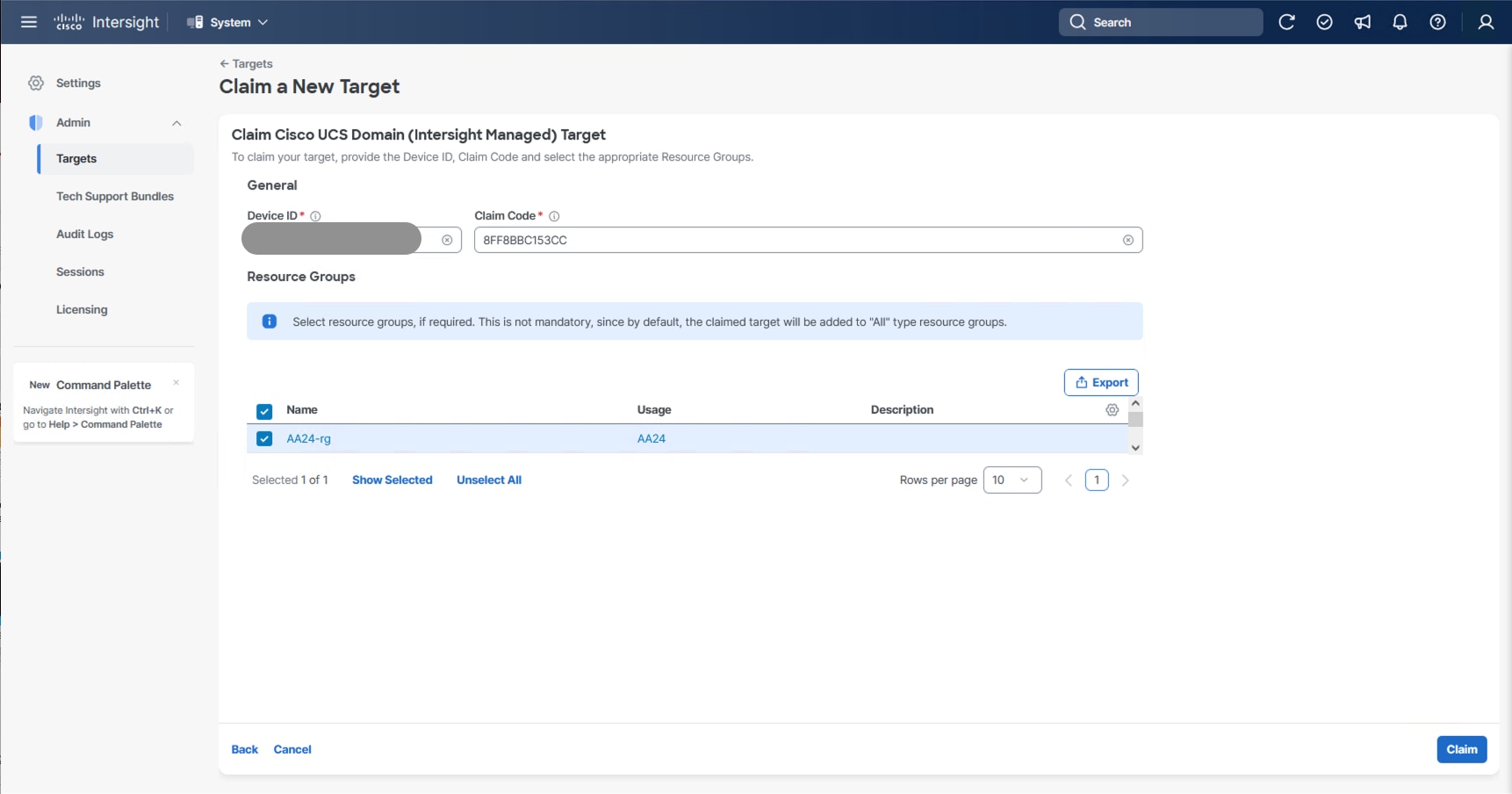

Step 7. Copy and paste the Device ID and Claim Code from the Cisco UCS FI in Step 2 to Intersight.

Step 8. Select the previously created resource group and click Claim.

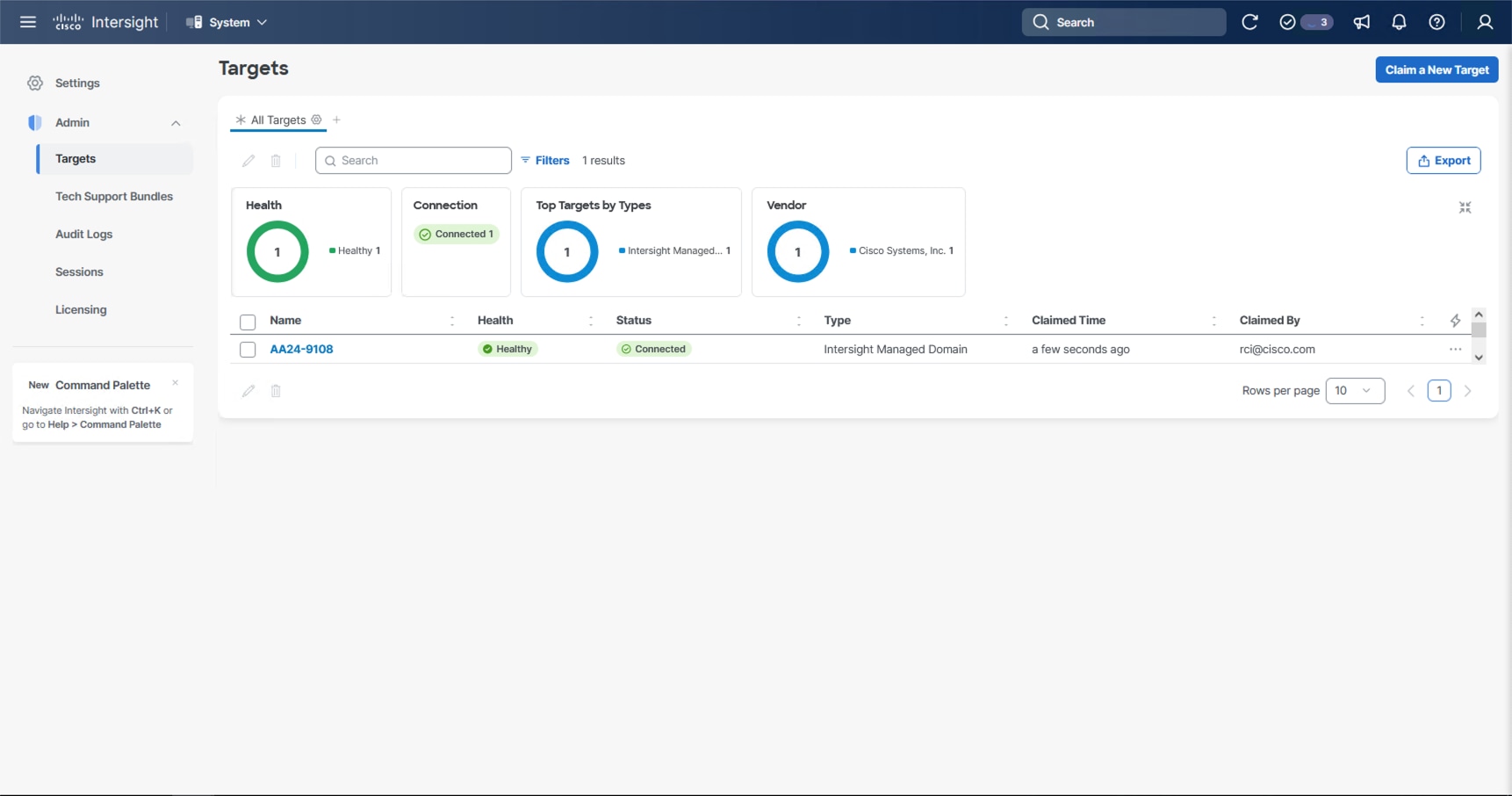

With a successful device claim, the Cisco UCS FI appears as a target in Cisco Intersight:

Step 9. Log into the web GUI of the Cisco UCS fabric interconnect and click the browser Refresh button.

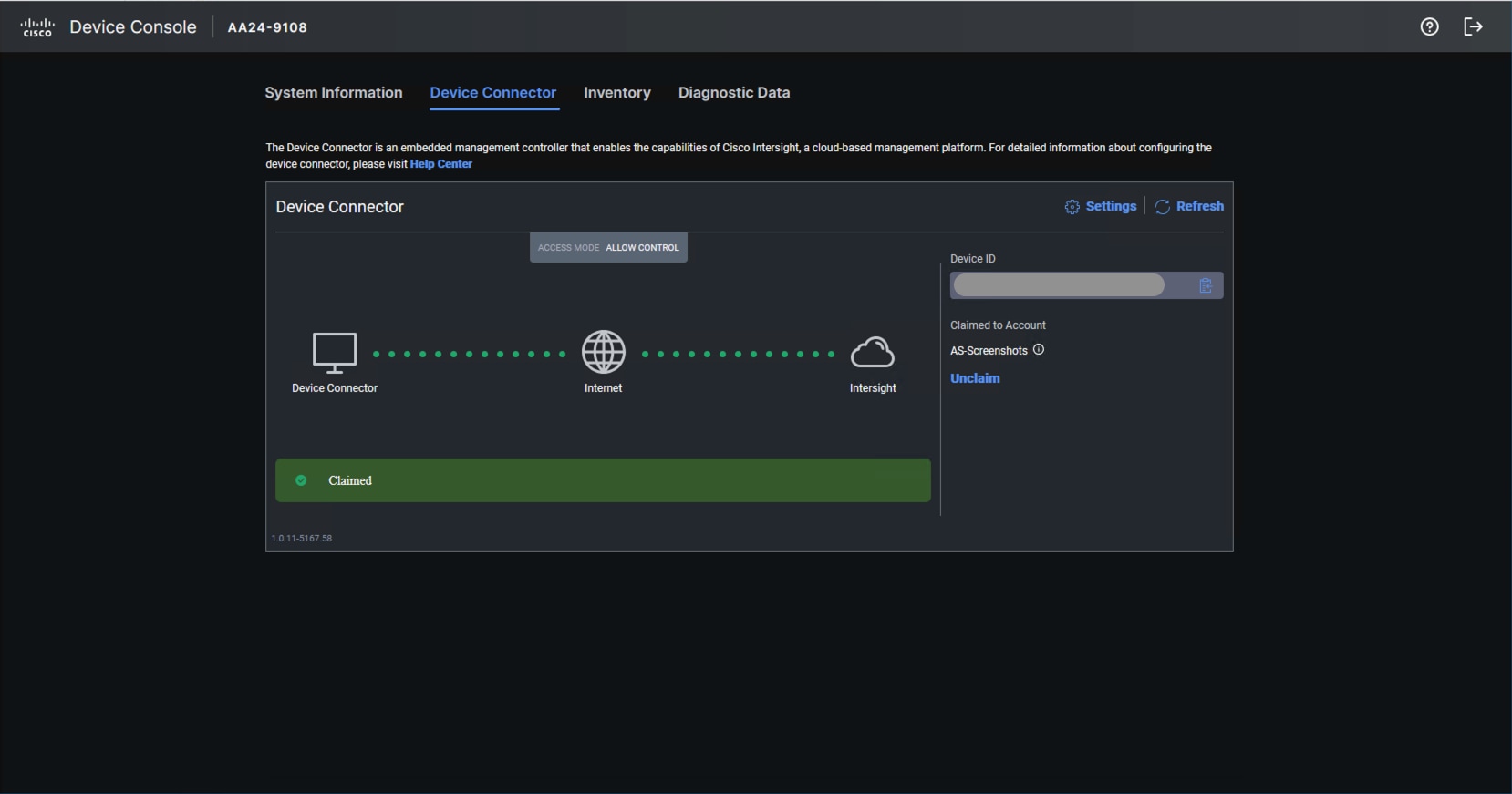

The fabric interconnect status is now set to Claimed:

Procedure 3. Upgrade Fabric Interconnect Firmware using Cisco Intersight

Step 1. Log into the Cisco Intersight portal.

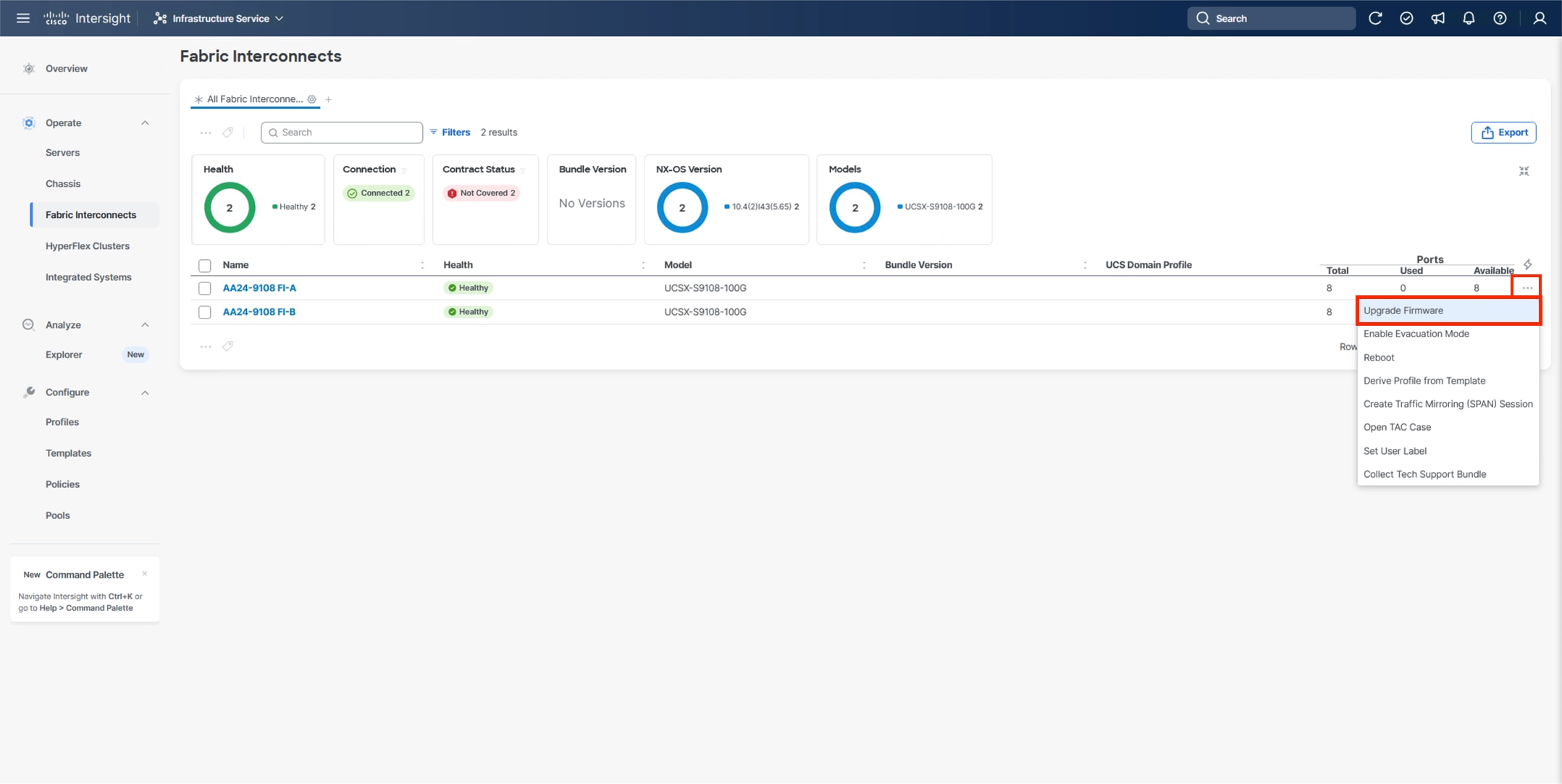

Step 2. At the top, from the drop-down list select Infrastructure Service and then select Fabric Interconnects under Operate on the left.

Step 3. Click the ellipsis (…) at the end of the row for either of the Fabric Interconnects and select Upgrade Firmware.

Step 4. Click Start.

Step 5. Verify the Fabric Interconnect information and click Next.

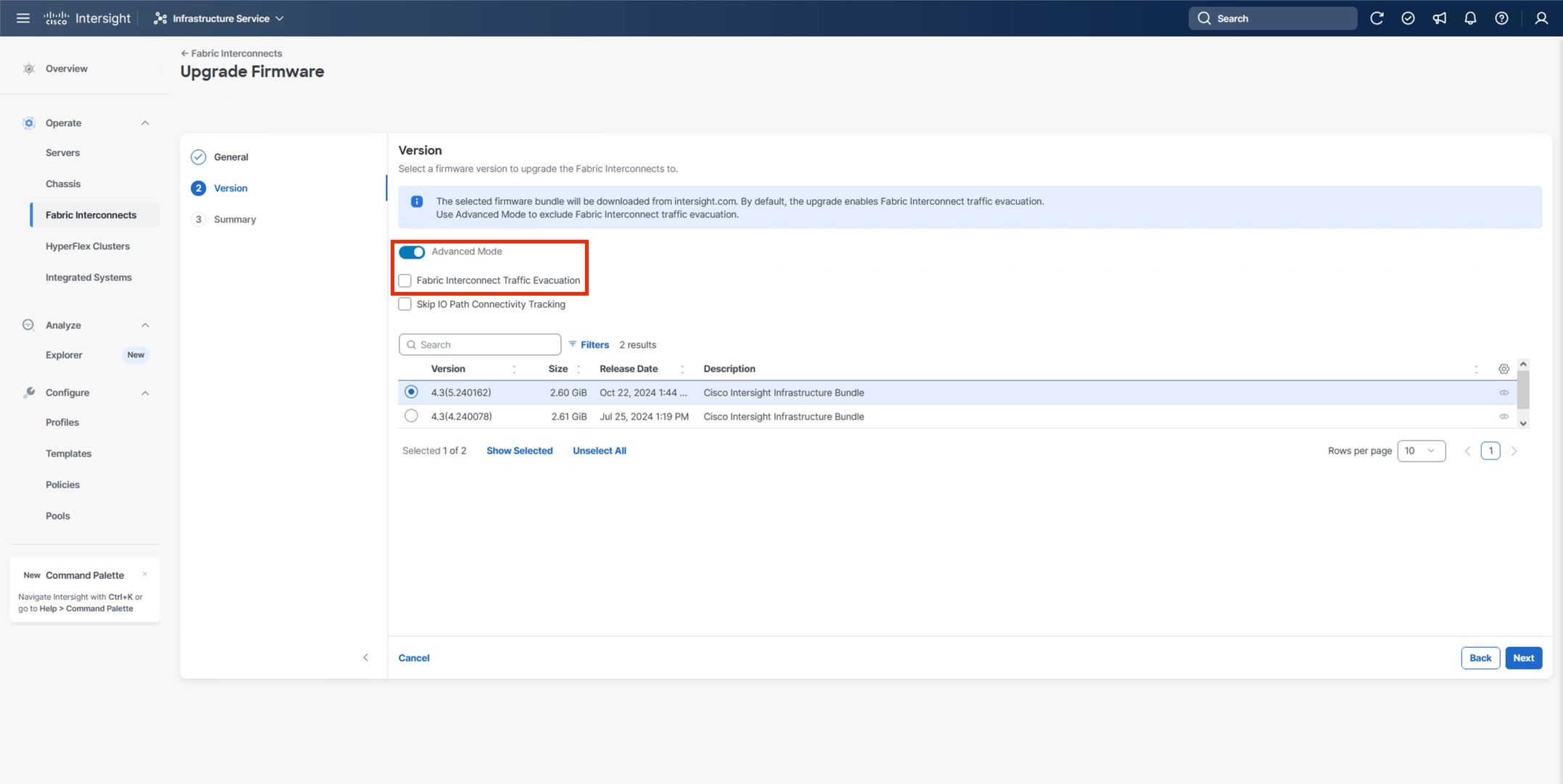

Step 6. Enable Advanced Mode using the toggle switch and uncheck Fabric Interconnect Traffic Evacuation.

Step 7. Select 4.3(5) or other currently appropriate release from the list and click Next.

Note: 4.3(5) is the minimum required release version for supporting X215c M8 Compute Nodes.

Step 8. Verify the information and click Upgrade to start the upgrade process.

Step 9. Monitor the Request panel of the main Intersight screen, because the system prompts for user permission before upgrading each FI. Click the Circle with Arrow and follow the on-screen prompts to grant permission.

Step 10. Wait for both the FIs to successfully upgrade.
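When choosing a release in Step 7, the minimum-version rule from the note can be expressed as a small comparison. This Python sketch assumes UCS release strings take the form `major.minor(maintenance...)`, for example `4.3(5)` or `4.3(4a)`, and compares only the leading three numbers against 4.3(5). The helper names and the sample strings are illustrative, not published builds.

```python
import re

# Minimum FI release for X215c M8 compute nodes, taken from the note: 4.3(5).
MIN_X215C_M8_RELEASE = (4, 3, 5)

# Matches the leading "major.minor(maintenance" portion of strings such as
# "4.3(5)", "4.3(5.250001)", or "4.3(4a)"; any build or patch suffix is ignored.
_RELEASE_RE = re.compile(r"^\s*(\d+)\.(\d+)\((\d+)")


def release_tuple(version: str) -> tuple:
    match = _RELEASE_RE.match(version)
    if match is None:
        raise ValueError(f"unrecognized UCS release string: {version!r}")
    return tuple(int(part) for part in match.groups())


def supports_x215c_m8(version: str) -> bool:
    # Tuples compare element by element, so (4, 4, 1) >= (4, 3, 5) is True.
    return release_tuple(version) >= MIN_X215C_M8_RELEASE


for candidate in ("4.3(4a)", "4.3(5)", "4.3(5.250001)"):
    print(candidate, supports_x215c_m8(candidate))
```

Ignoring the build suffix is deliberate: the note states the minimum at the maintenance-release level, so any 4.3(5) build satisfies it.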

A Cisco UCS domain profile configures a fabric interconnect pair through reusable policies, allows configuration of the ports and port channels, and configures the VLANs and VSANs in the network. It defines the characteristics of the fabric interconnects and their configured ports. The domain-related policies can be attached to the profile either at the time of creation or later. One Cisco UCS domain profile can be assigned to one fabric interconnect domain.

Procedure 1. Configure a Cisco UCS Domain Profile

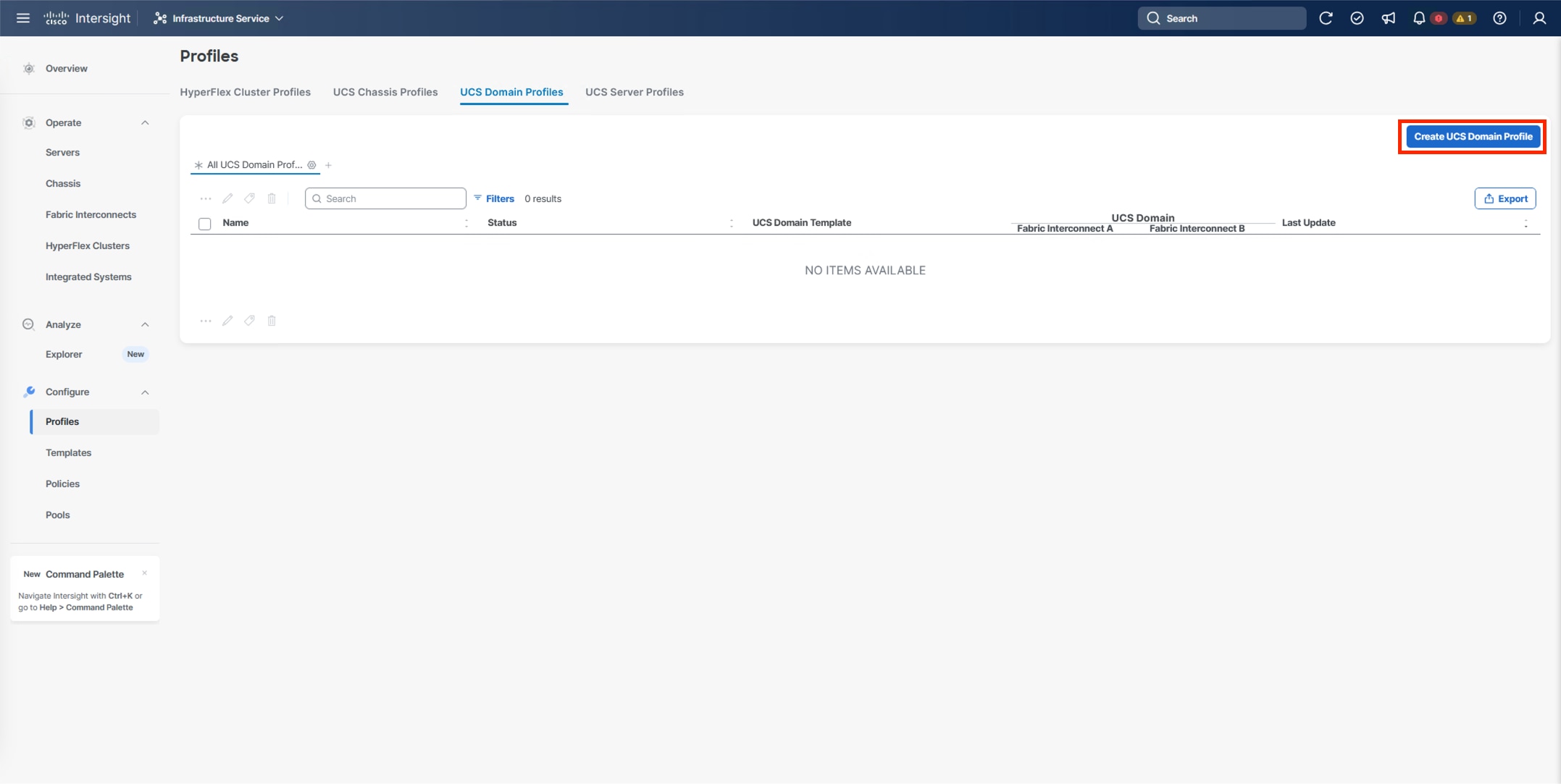

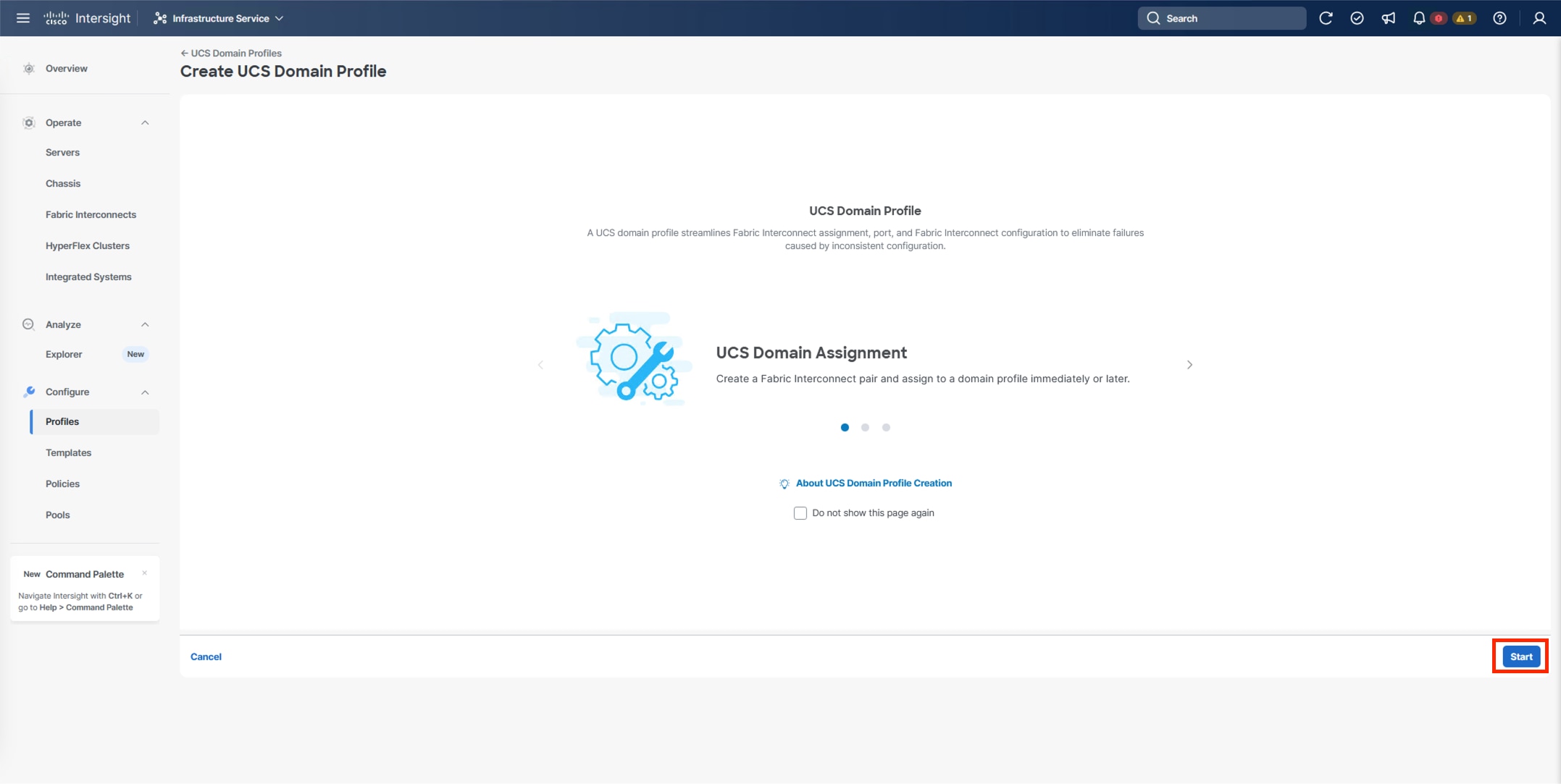

Step 1. Log into the Cisco Intersight portal.

Step 2. At the top, from the drop-down list, select Infrastructure Service and under Configure, select Profiles.

Step 3. In the main window, select UCS Domain Profiles and click Create UCS Domain Profile.

Step 4. From the Create UCS Domain Profile screen, click Start.

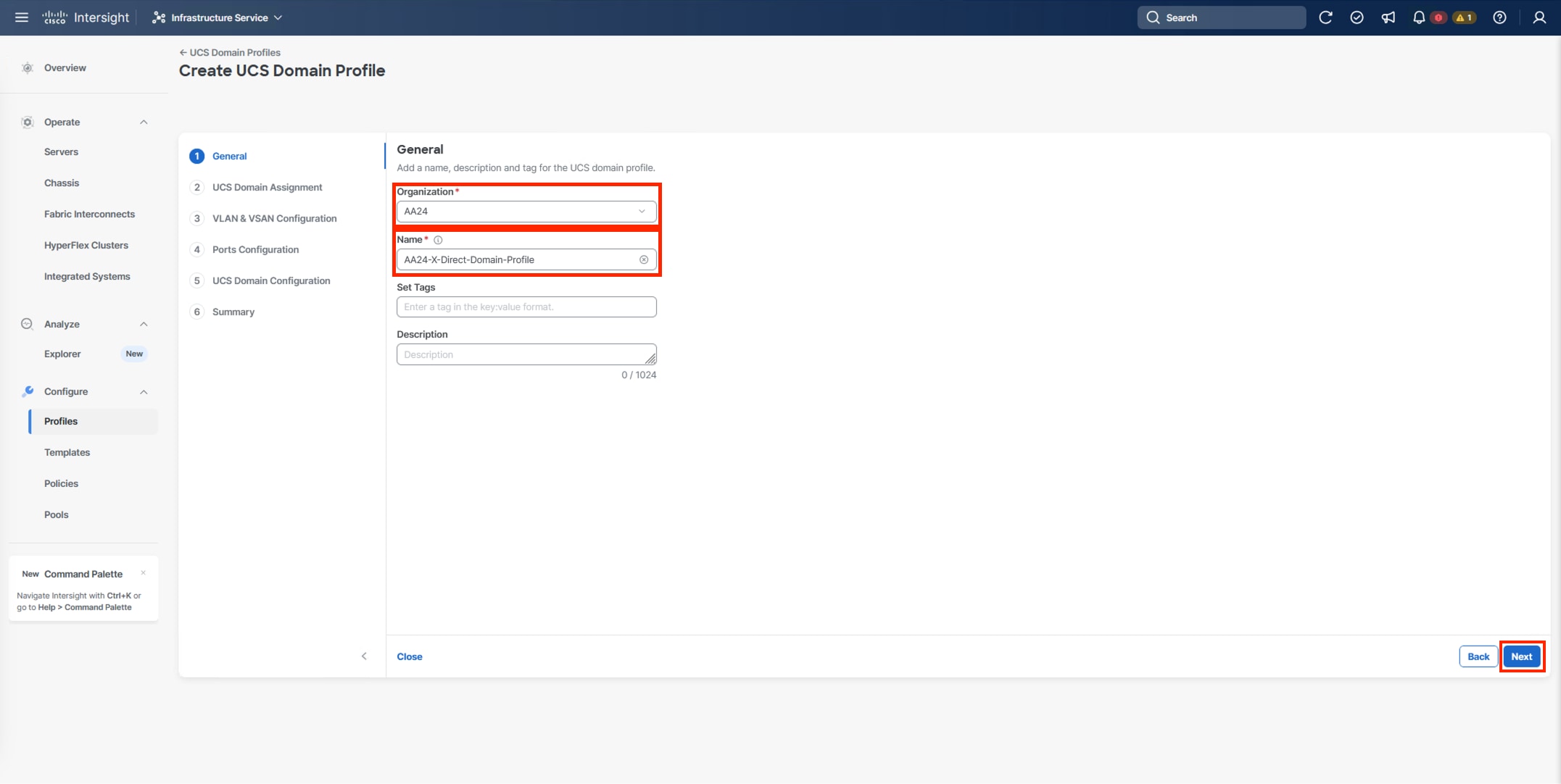

Procedure 2. UCS Domain Profile General Configuration

Step 1. Select the organization from the drop-down list (for example, AA24).

Step 2. Provide a name for the domain profile (for example, AA24-X-Direct-Domain-Profile).

Step 3. Provide an optional Description.

Step 4. Click Next.

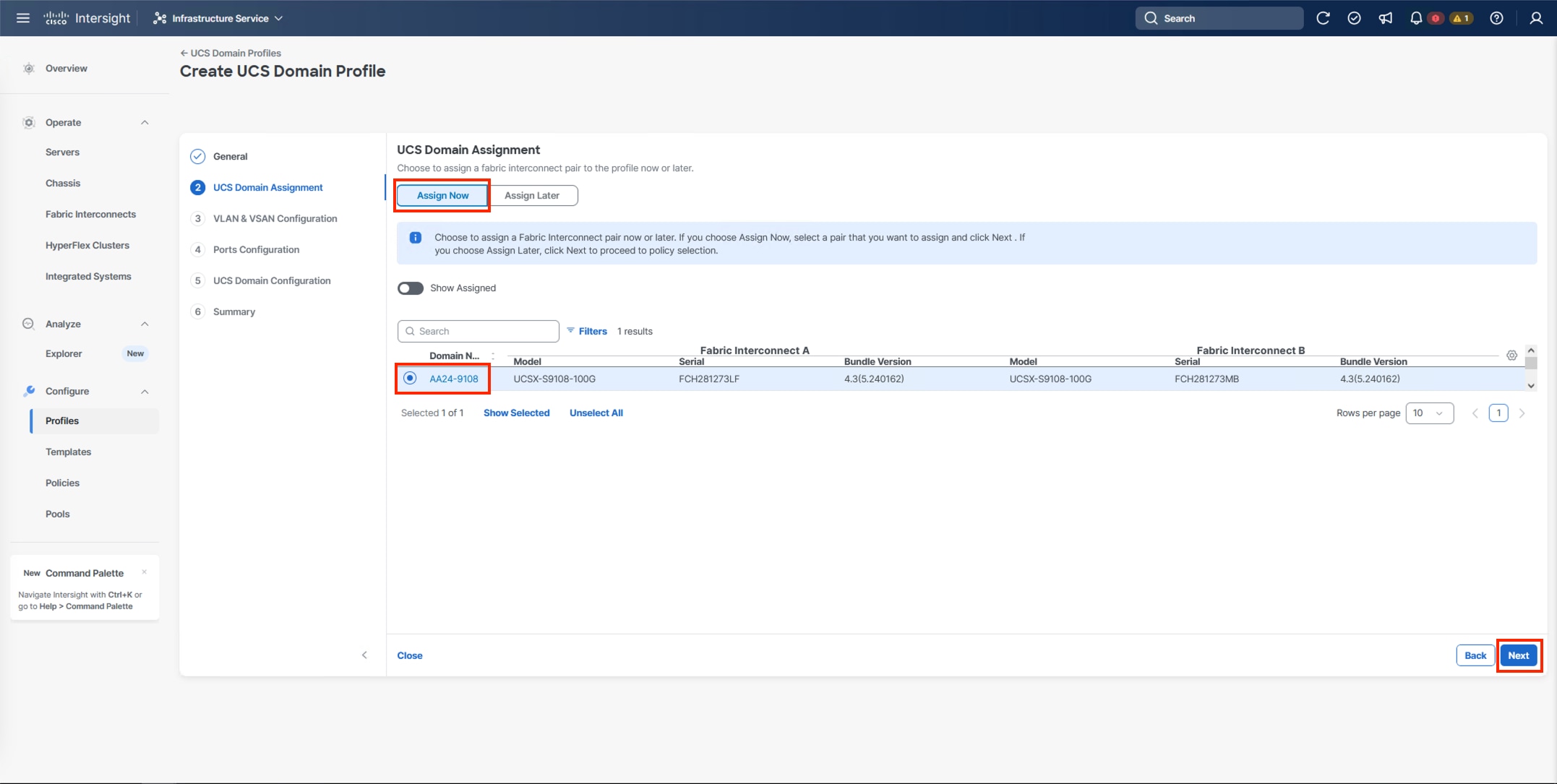

Procedure 3. UCS Domain Assignment

Step 1. Assign the Cisco UCS domain to this new domain profile by clicking Assign Now and selecting the previously added Cisco UCS domain (for example, AA24-9108).

Step 2. Click Next.

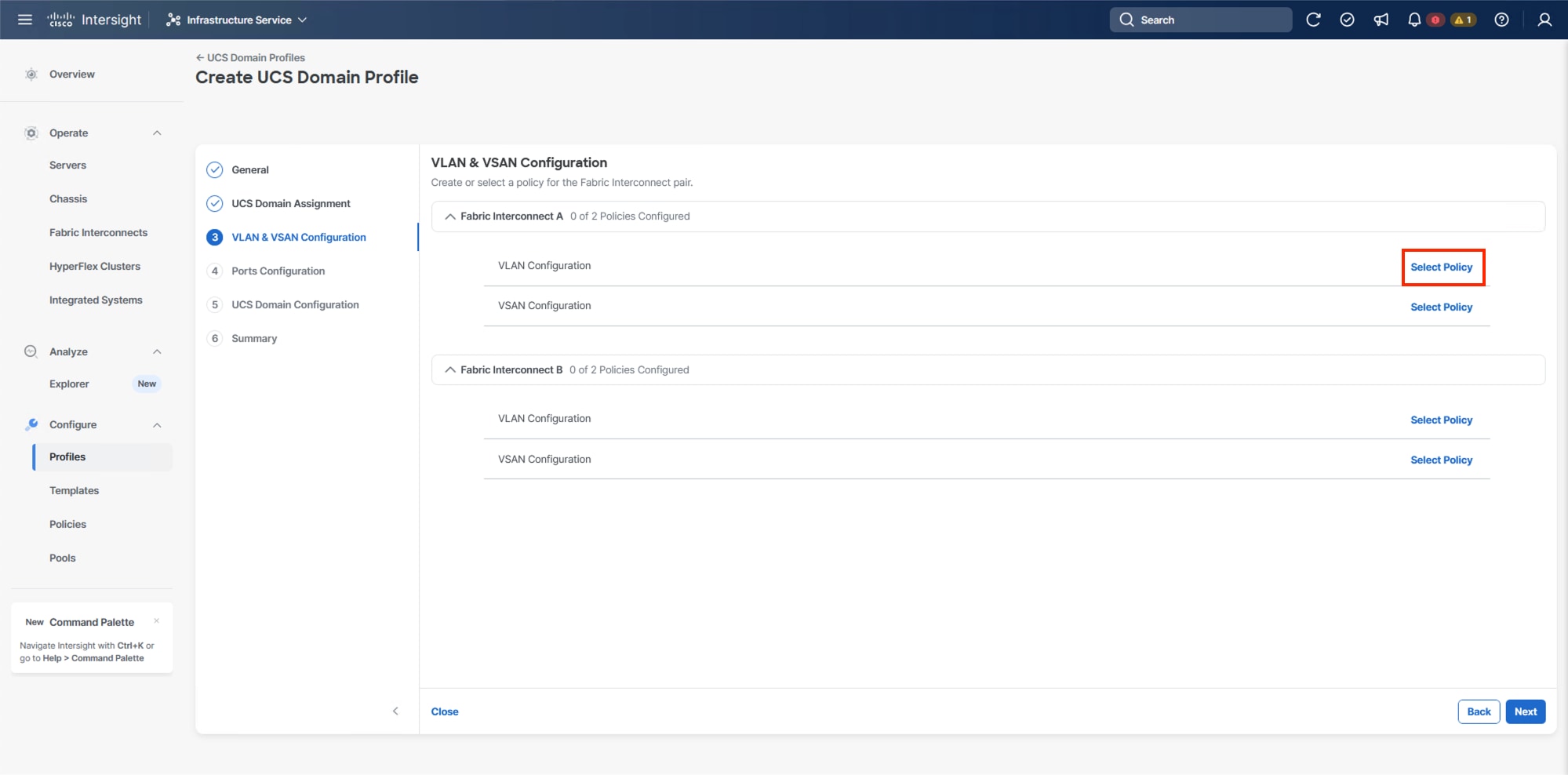

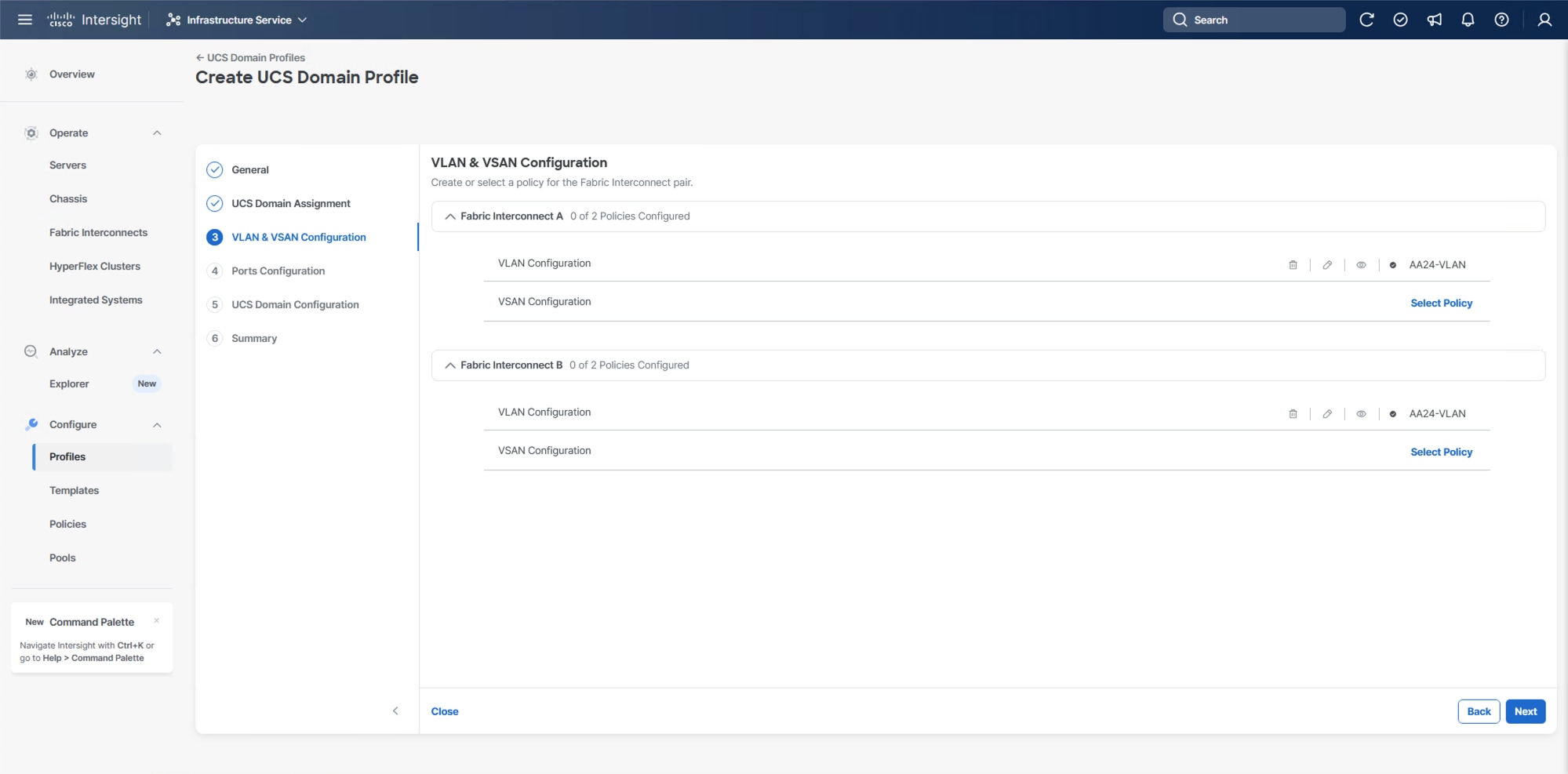

Procedure 4. VLAN and VSAN Configuration

In this procedure, a single VLAN policy is created and applied to both fabric interconnects. Designs that use Fibre Channel would also create two individual VSAN policies, because the VSAN IDs are unique to each fabric interconnect; VSANs are not used in this design.

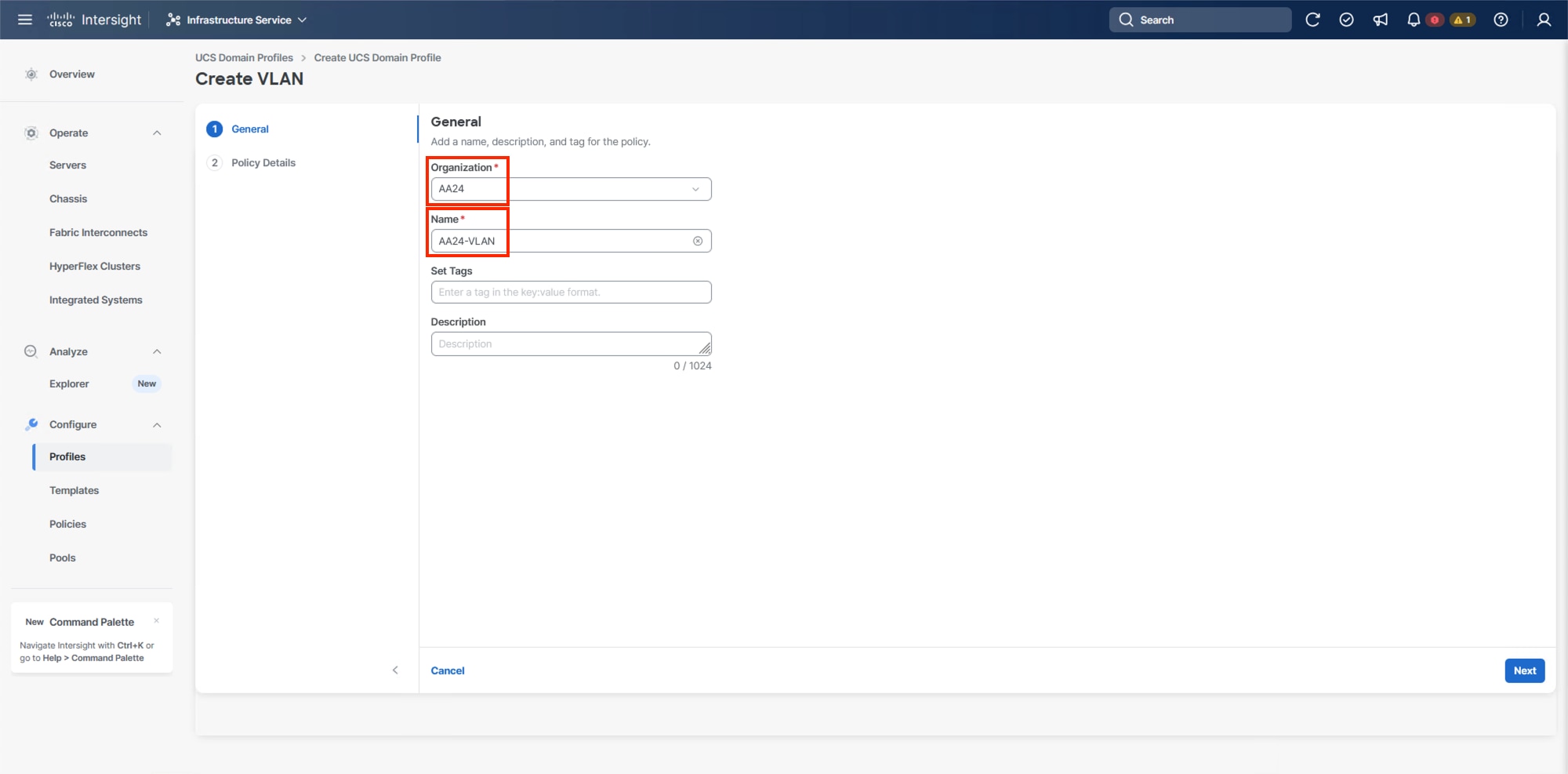

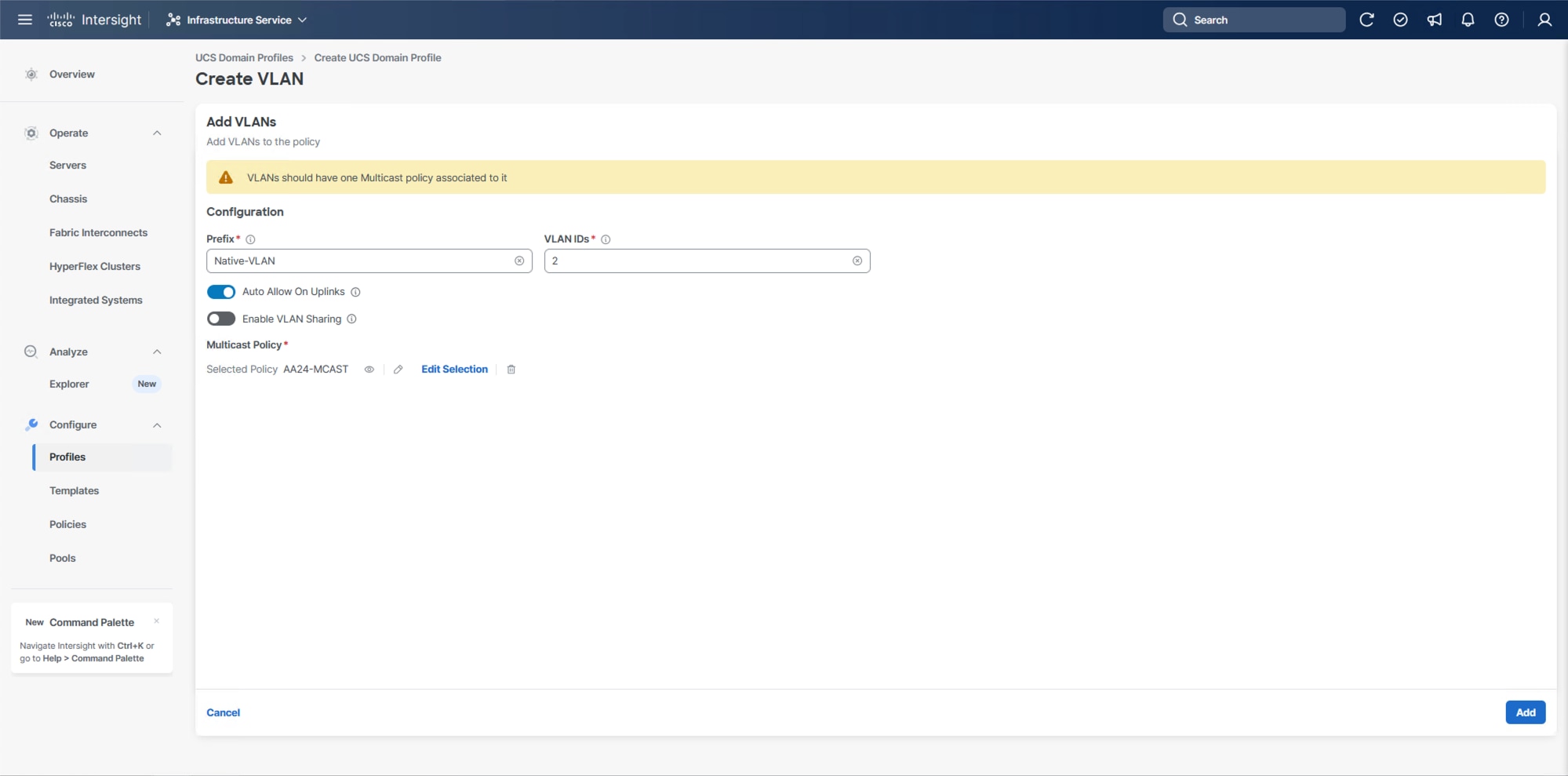

VLAN Configuration

Step 1. Click Select Policy next to VLAN Configuration under Fabric Interconnect A.

Step 2. In the pane on the right, click Create New.

Step 3. Verify the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-VLAN).

Step 4. Click Next.

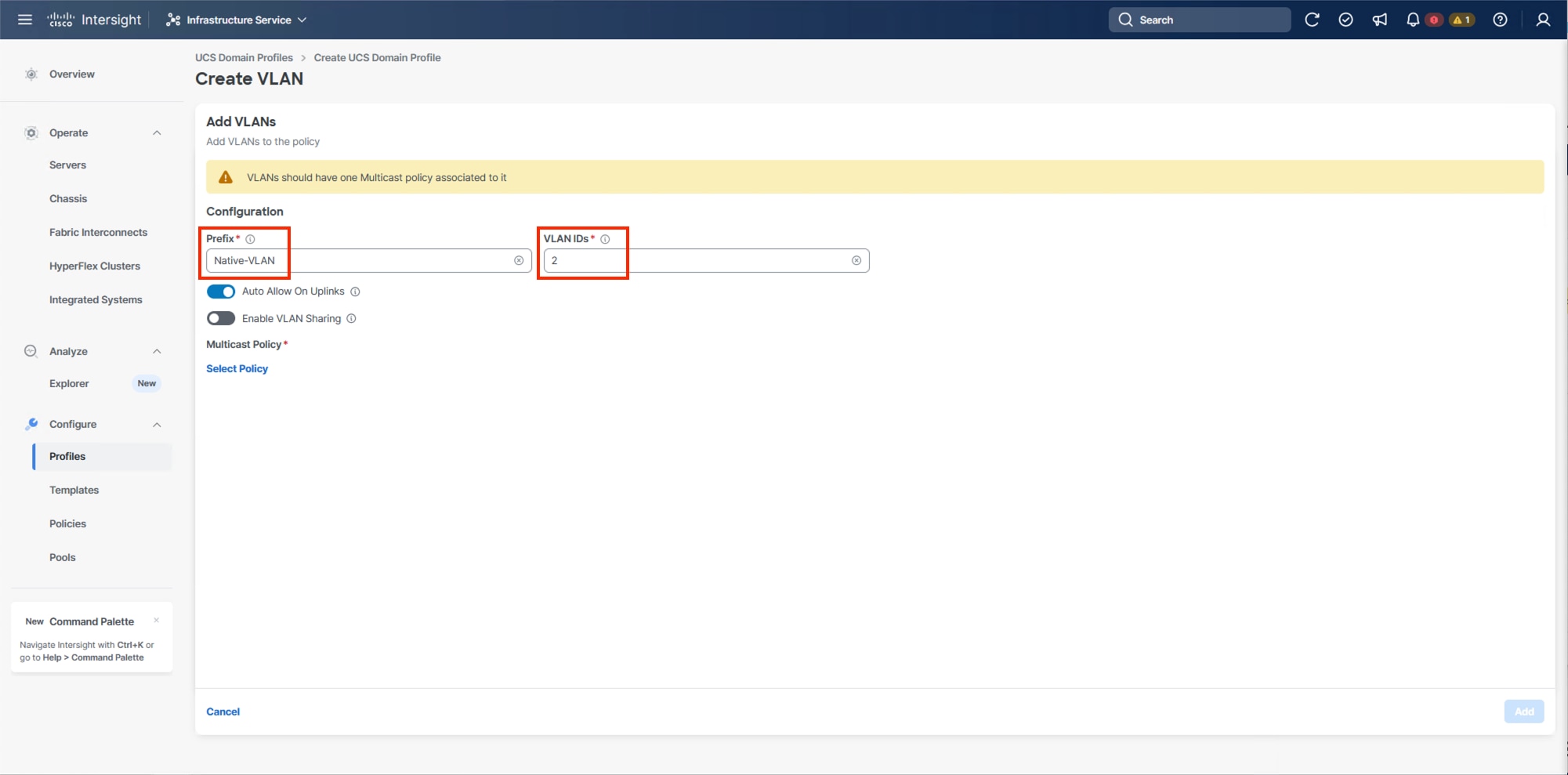

Step 5. Click Add VLANs.

Step 6. Provide a name and VLAN ID for the native VLAN.

Step 7. Make sure Auto Allow On Uplinks is enabled.

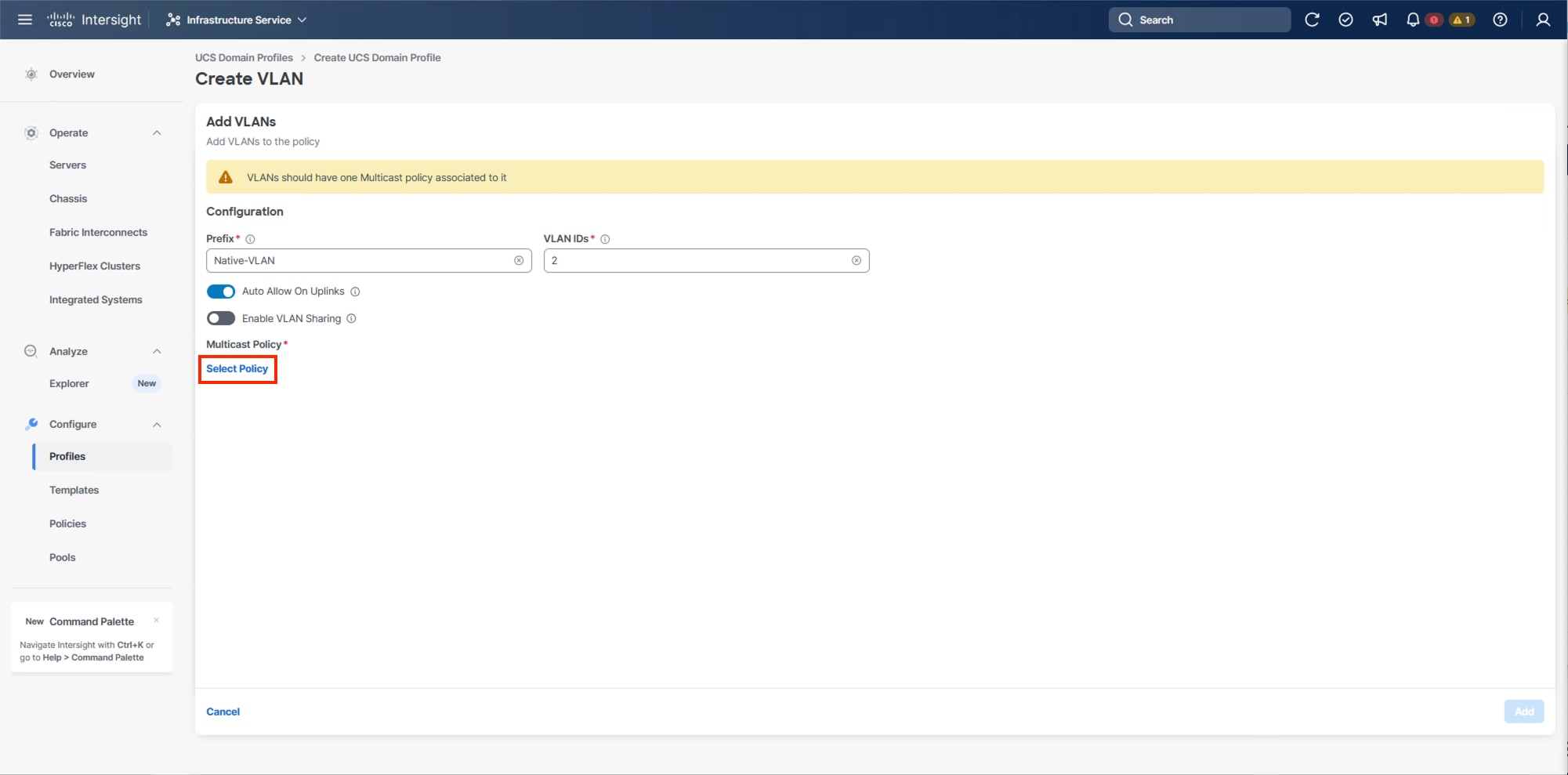

Step 8. To create the required Multicast policy, click Select Policy under Multicast Policy*.

Step 9. In the window on the right, click Create New to create a new Multicast Policy.

Step 10. Provide a Name for the Multicast Policy (for example, AA24-MCAST).

Step 11. Provide optional Description and click Next.

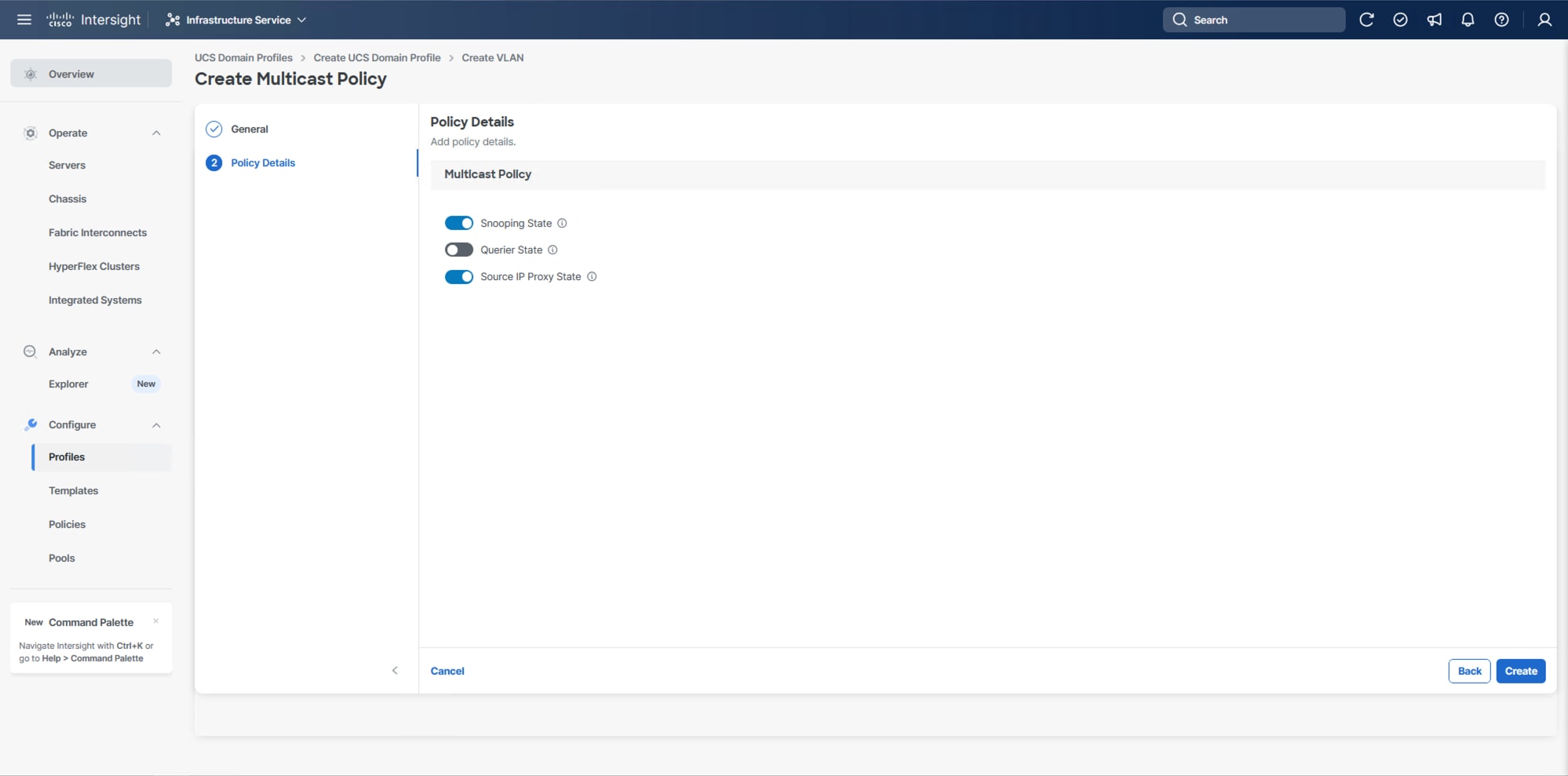

Step 12. Leave the default settings selected and click Create.

Step 13. Click Add to add the VLAN.

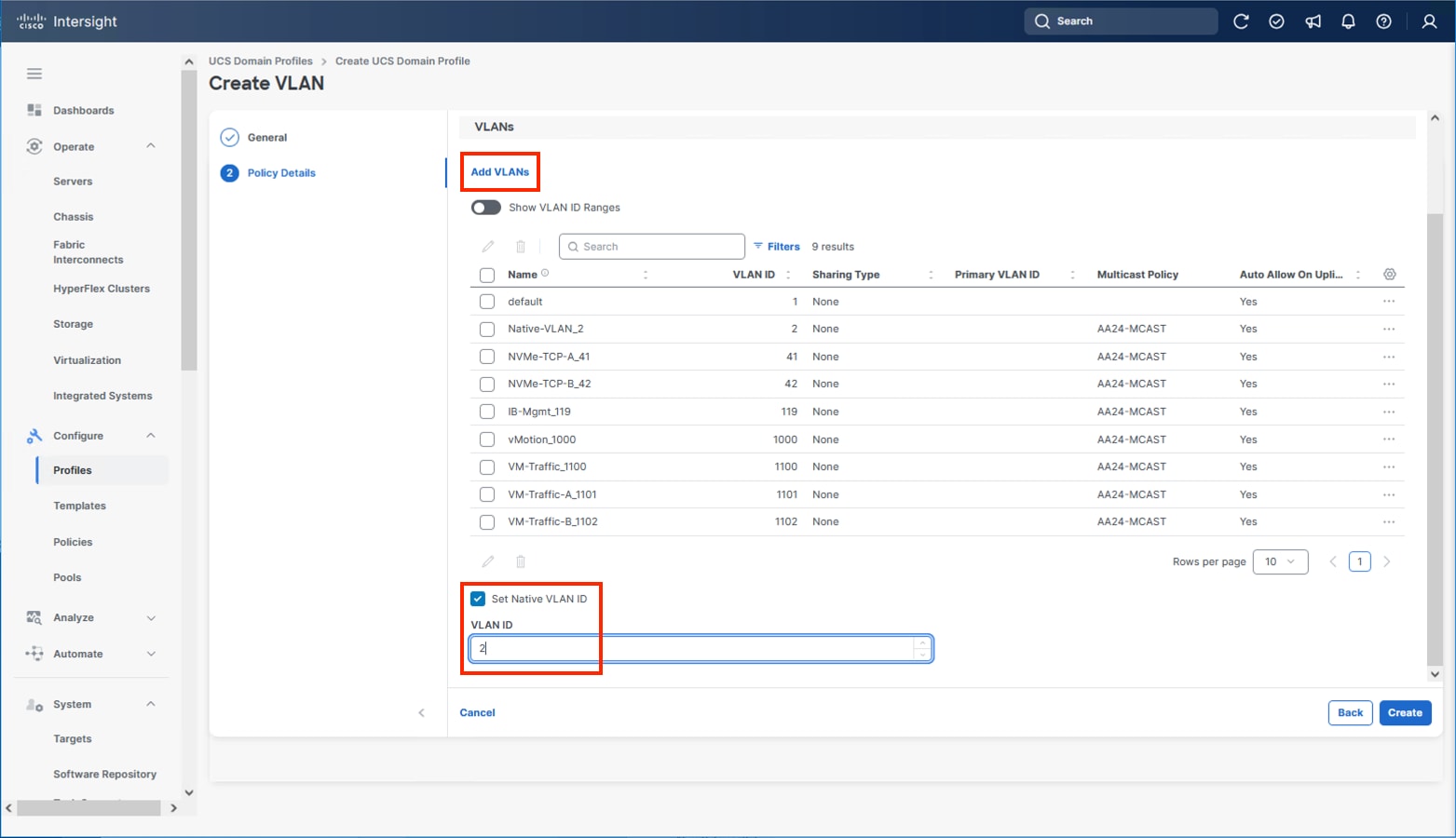

Step 14. Add the remaining VLANs listed in Table 2. Click Add VLANs and enter the VLANs one by one. Reuse the previously created multicast policy for all the VLANs.

Step 15. Select Set Native VLAN ID and enter the VLAN number (for example, 2) under VLAN ID.

Step 16. Click Create to finish creating the VLAN policy and associated VLANs.

Step 17. Click Select Policy next to VLAN Configuration for Fabric Interconnect B and select the same VLAN policy.
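The VLAN policy built in Steps 5 through 16 can be checked before it is attached to both fabric interconnects. The following Python sketch models the policy with a hypothetical `Vlan` dataclass and flags IDs outside the general 802.1Q range of 1 to 4094, duplicate names or IDs, a missing multicast policy, and a native VLAN that is not defined. It does not model any platform-reserved VLAN ranges. Native VLAN 2 comes from Step 15; the other names and IDs are hypothetical placeholders for the values in Table 2.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vlan:
    name: str
    vlan_id: int
    multicast_policy: str = "AA24-MCAST"  # the multicast policy reused for every VLAN


def check_vlan_policy(vlans: list, native_vlan_id: int) -> list:
    """Return problems found in a VLAN policy definition; an empty list means none found."""
    problems = []
    ids = [v.vlan_id for v in vlans]
    names = [v.name for v in vlans]
    for v in vlans:
        if not 1 <= v.vlan_id <= 4094:
            problems.append(f"{v.name}: VLAN ID {v.vlan_id} is outside 1-4094")
        if not v.multicast_policy:
            problems.append(f"{v.name}: a multicast policy is required")
    if len(set(ids)) != len(ids):
        problems.append("duplicate VLAN IDs")
    if len(set(names)) != len(names):
        problems.append("duplicate VLAN names")
    if native_vlan_id not in ids:
        problems.append(f"native VLAN {native_vlan_id} is not defined in the policy")
    return problems


# Native VLAN 2 comes from Step 15; the other names and IDs are hypothetical.
policy = [Vlan("Native-VLAN", 2), Vlan("IB-Mgmt", 1011), Vlan("vMotion", 1030)]
print(check_vlan_policy(policy, native_vlan_id=2))  # prints []
```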

VSAN Configuration

Note: The VSAN Configuration is not used in this X-Direct solution. This VSP One based design uses IP storage; FC and FC-NVMe based implementations should refer to the previous VSI CVD Deployment Guide.

Step 1. Confirm the VLAN Policy is applied to both fabric interconnects.

Step 2. Click Next.

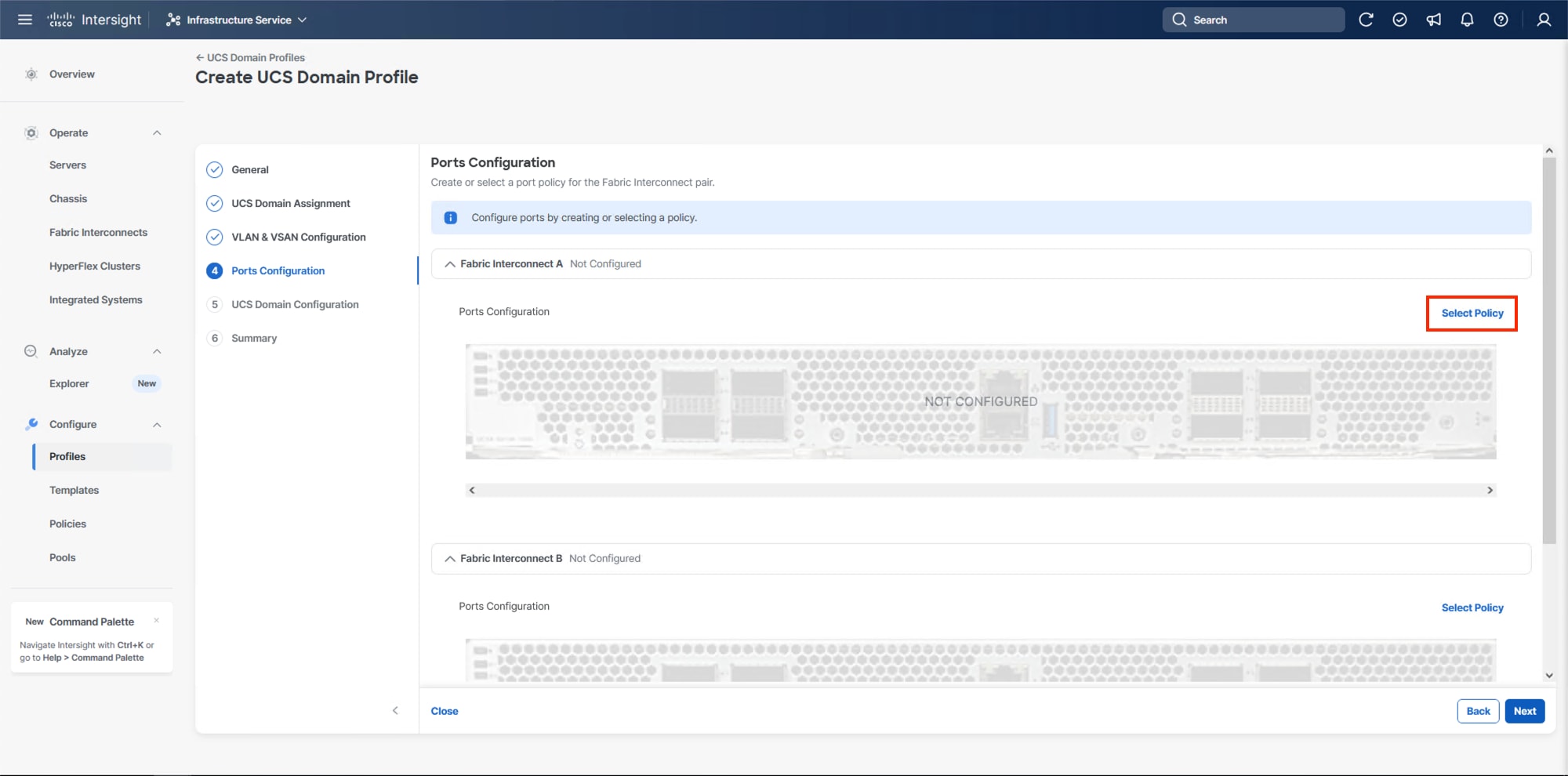

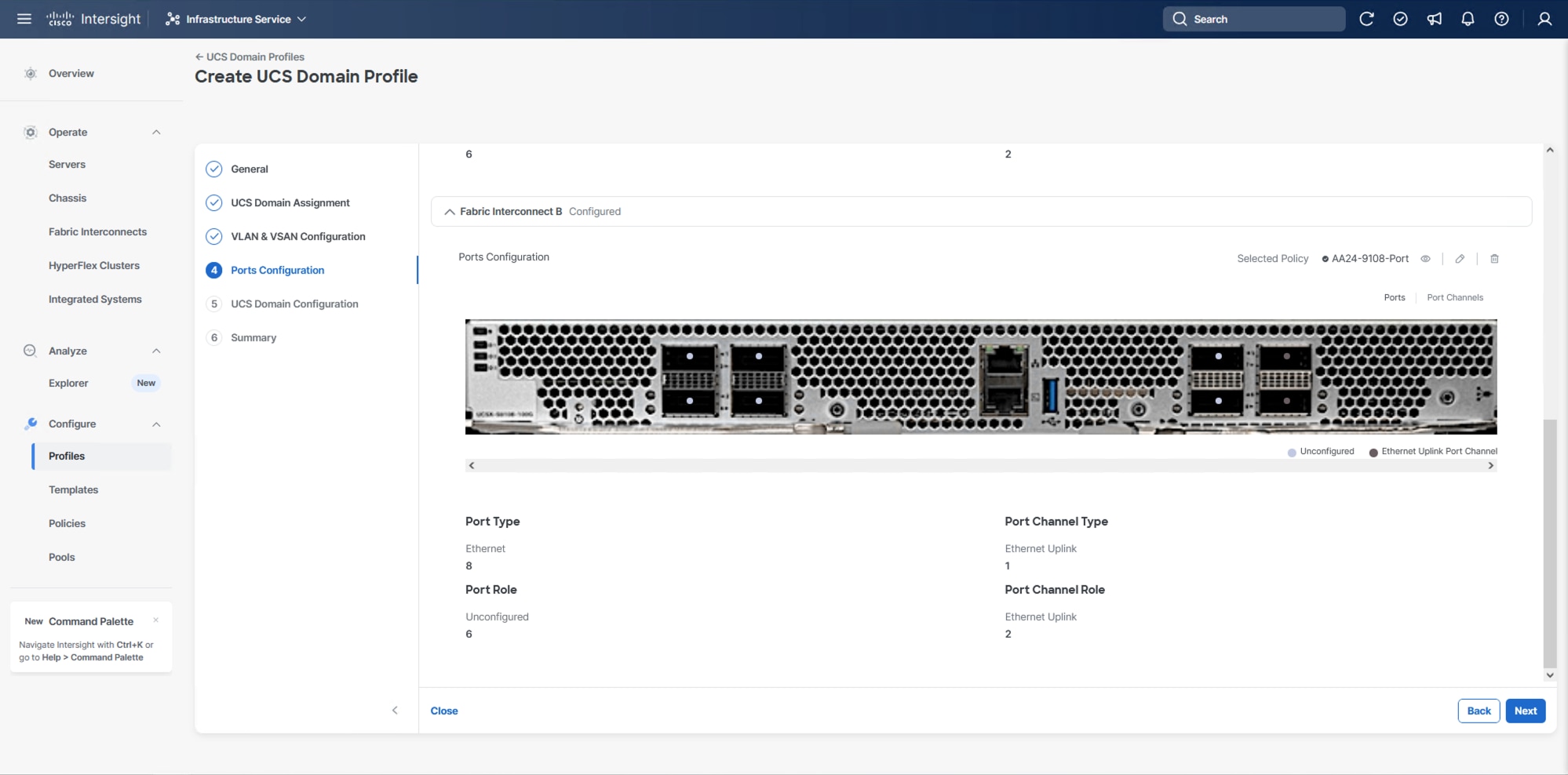

Procedure 5. Ports Configuration

This procedure creates the Port Configuration policy for Fabric Interconnect A and reuses the same policy for Fabric Interconnect B. If the port configurations differ between the two fabric interconnects, separate policies can be created.

Step 1. Click Select Policy for Fabric Interconnect A.

Step 2. Click Create New in the pane on the right to define a new port configuration policy.

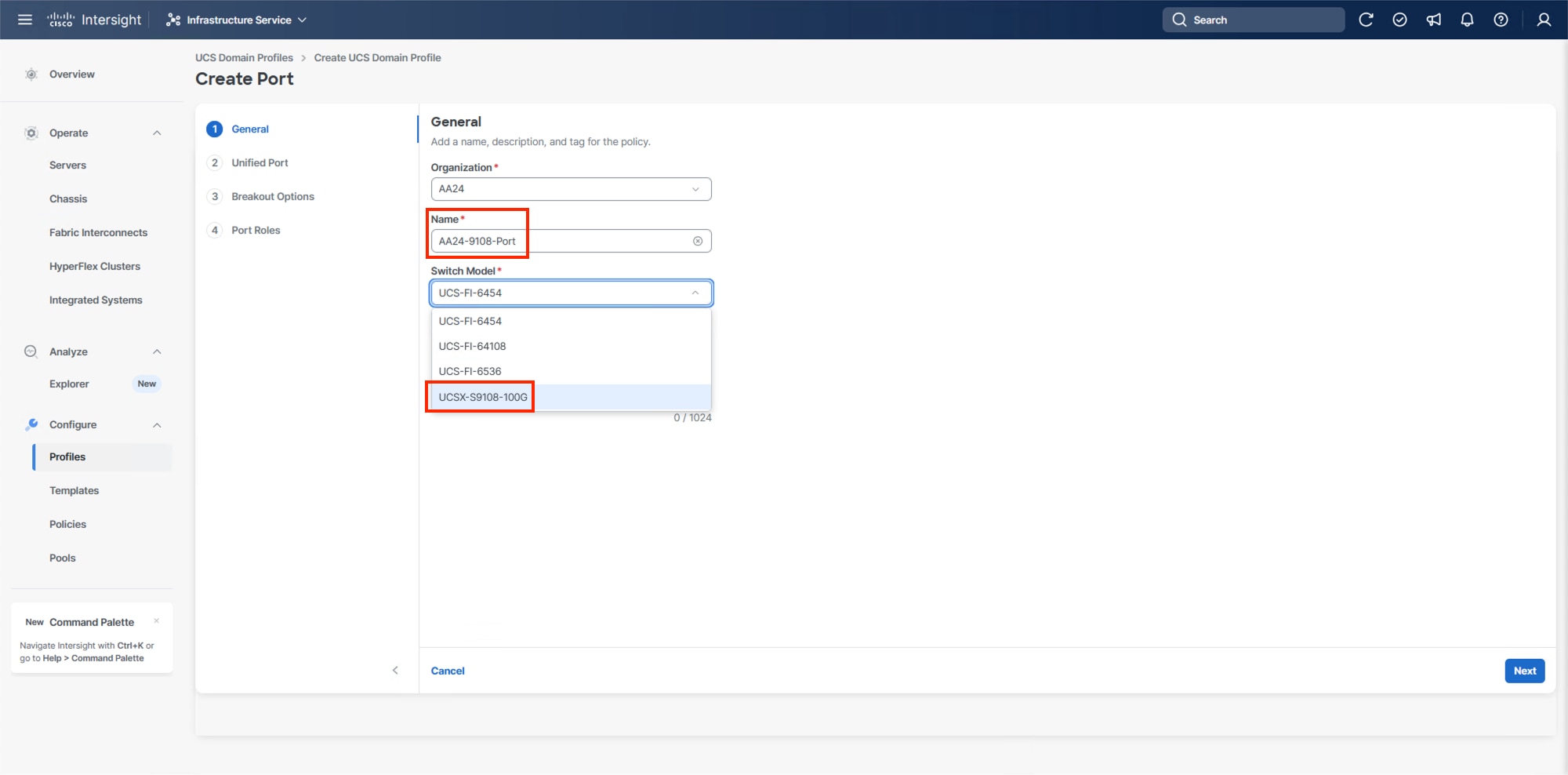

Step 3. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-9108-Port). Select the UCSX-S9108-100G Switch Model.

Step 4. Click Next.

Step 5. Ignore the Unified Port configuration. Click Next.

Step 6. No breakout ports are configured in the validated architecture, so ignore the Breakout Options unless your port placement requires adjustments. Click Next.

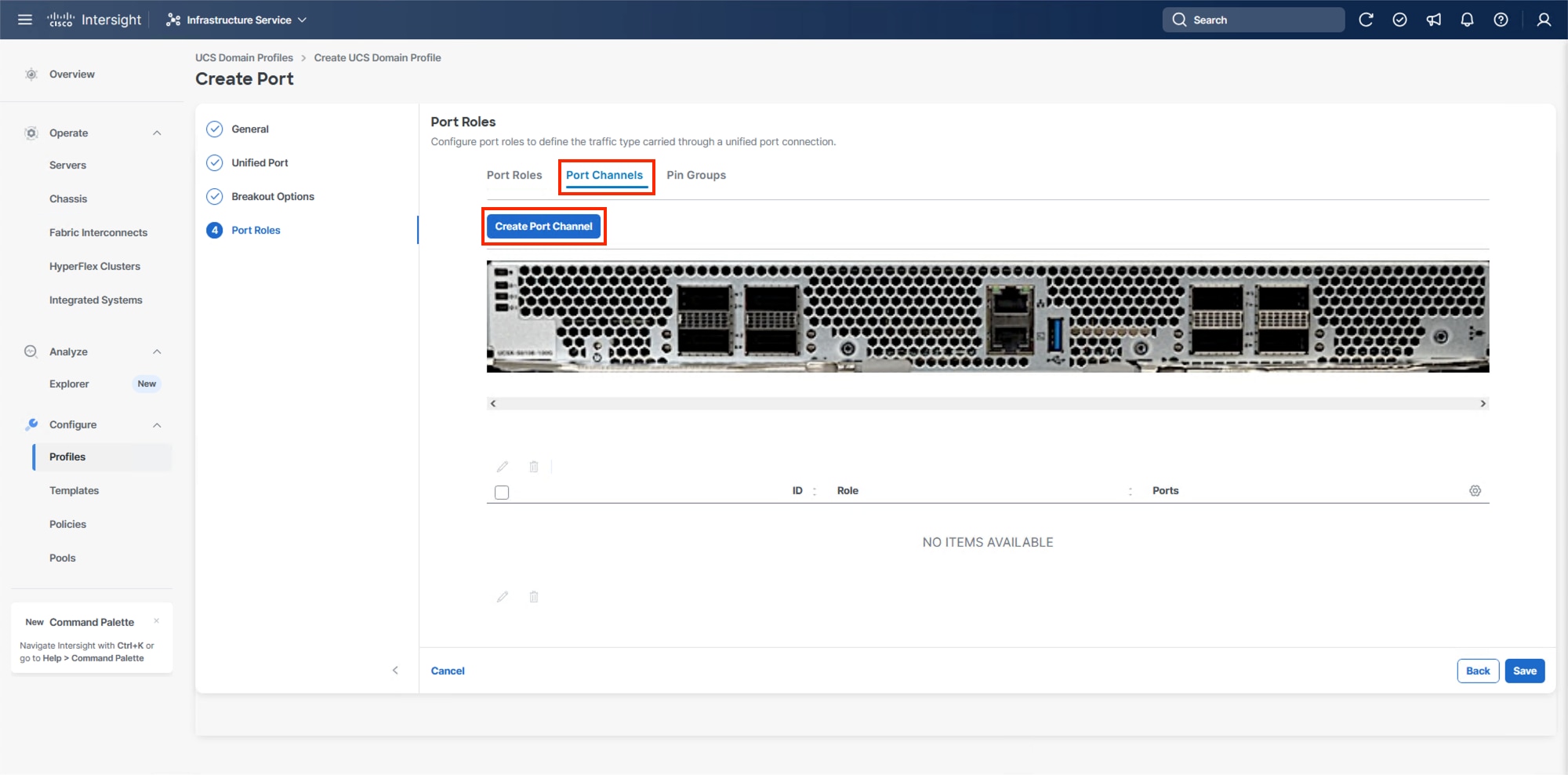

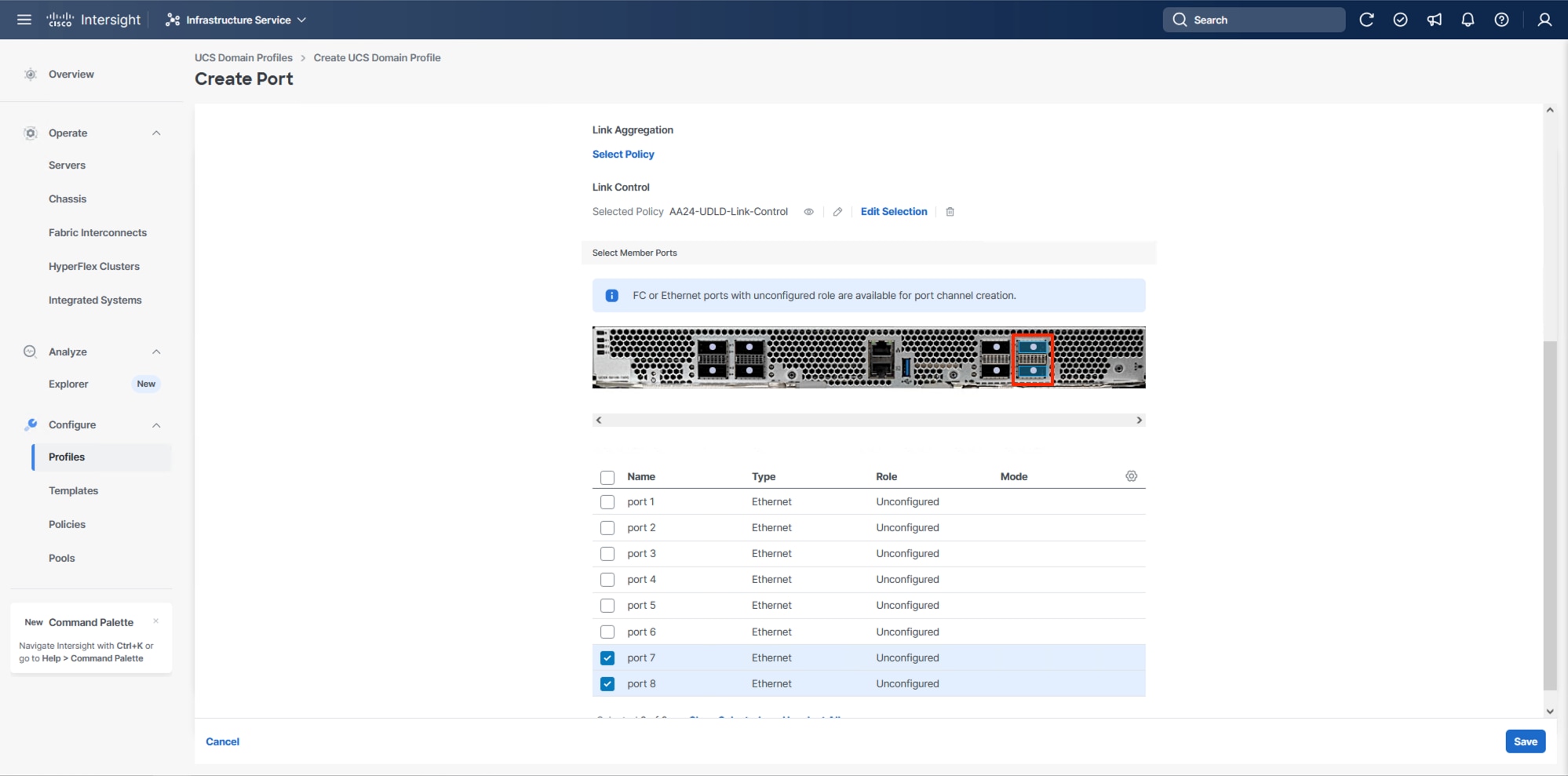

Step 7. In Port Roles, click the Port Channels tab in the center and click Create Port Channel.

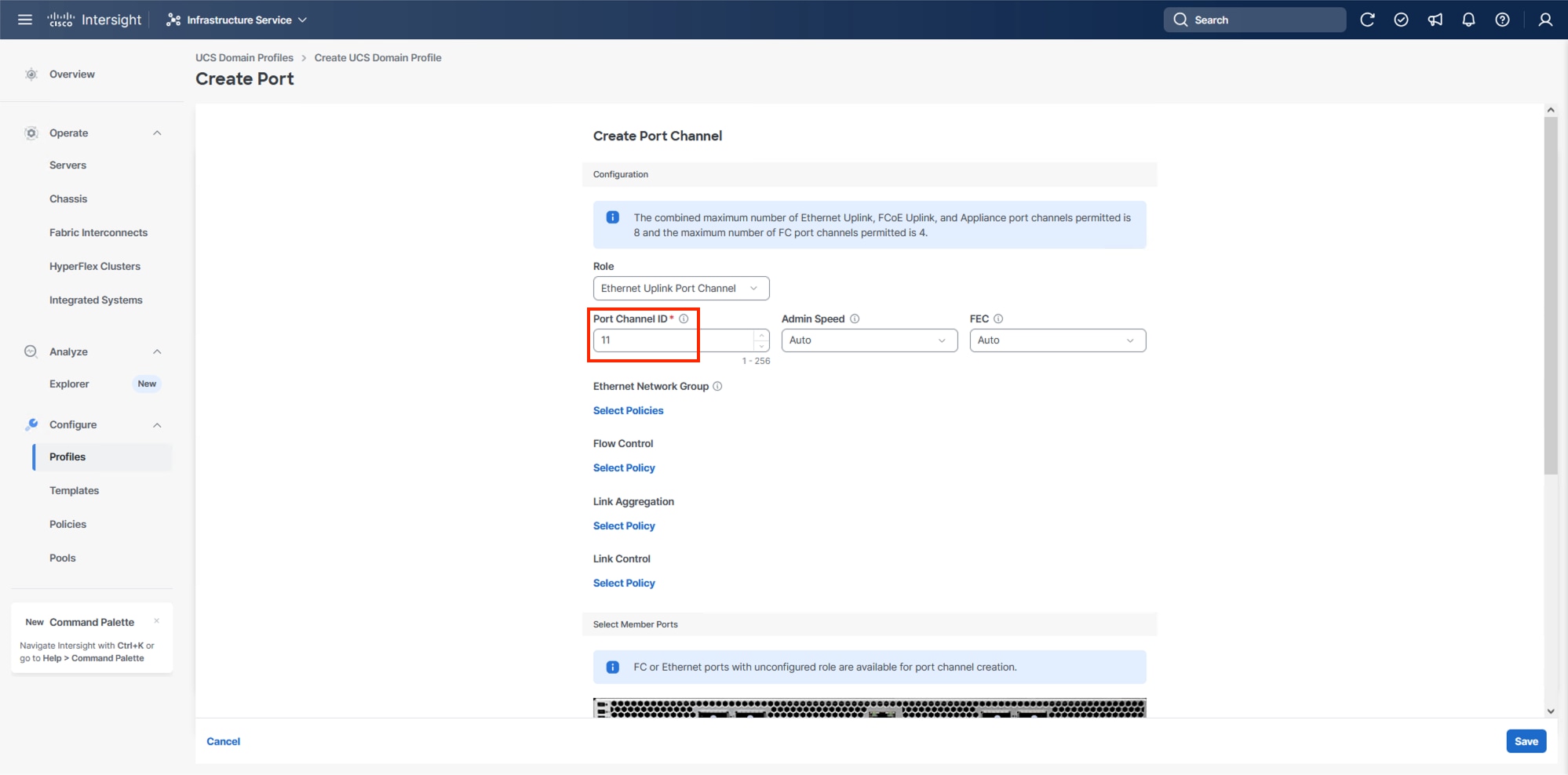

Step 8. To create the network uplinks to the Nexus 93600CD-GX switches, leave the Role as Ethernet Uplink Port selected.

Step 9. Specify a Port Channel ID (for example, 11). Specify an Admin Speed if the upstream ports require it; otherwise, leave it as Auto. Leave Forward Error Correction (FEC) as Auto.

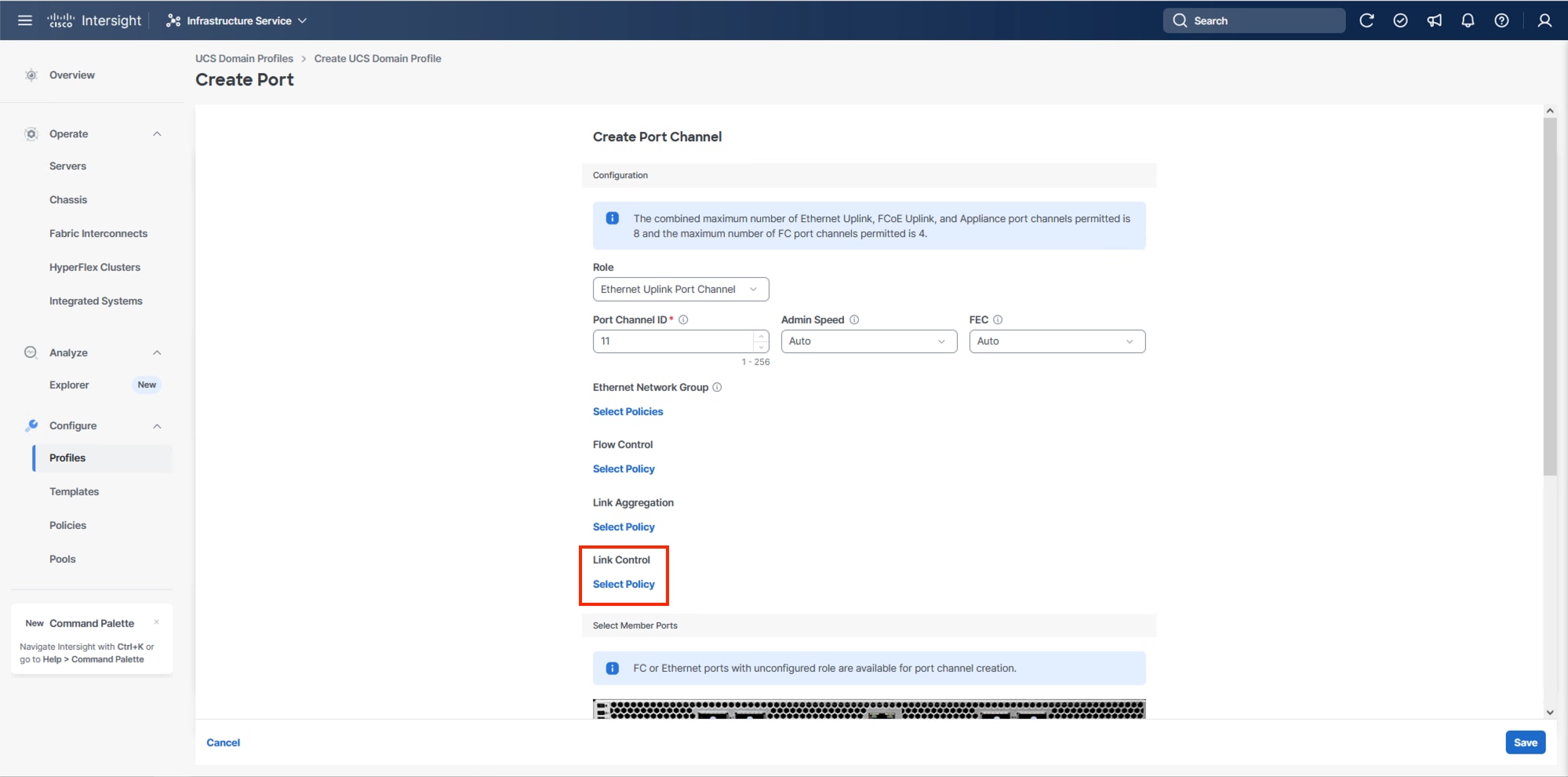

Note: Ethernet Network Group, Flow Control, and Link Aggregation policies, which define a disjoint Layer 2 domain or fine-tune port-channel parameters, can be configured here. These policies were not used in this deployment, and system default values were used.

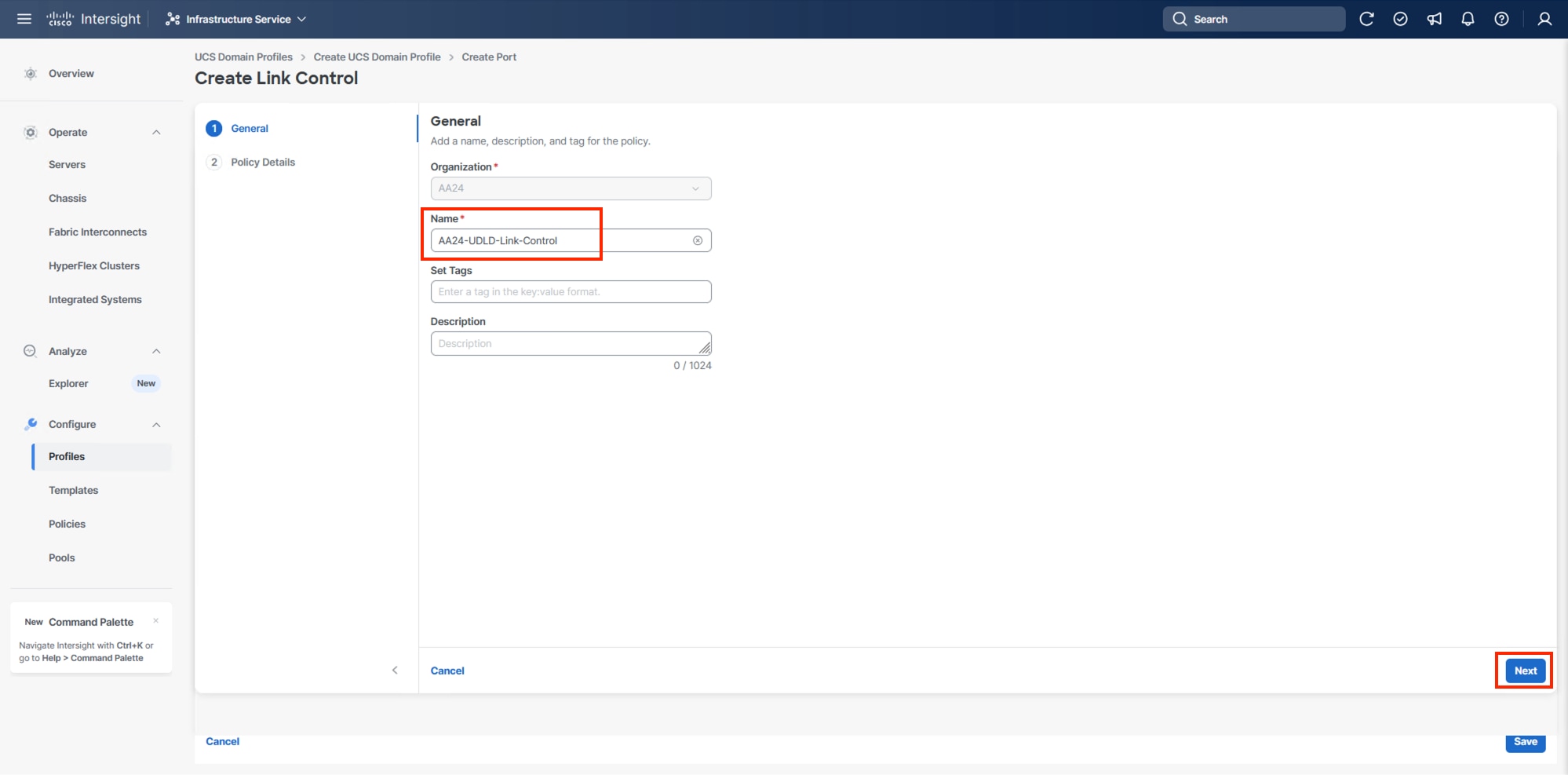

Step 10. Scroll down to Link Control and, if Select Member Ports is not visible within the Create Port Channel dialog, click Select Policy under Link Control and then select Create New.

Step 11. Provide a name for the policy (for example, AA24-UDLD-Link-Control) and click Next.

Step 12. Leave the default values selected and click Create.

Step 13. Scroll down to select the ports connected to the upstream Nexus switches (for example, ports 7 and 8).

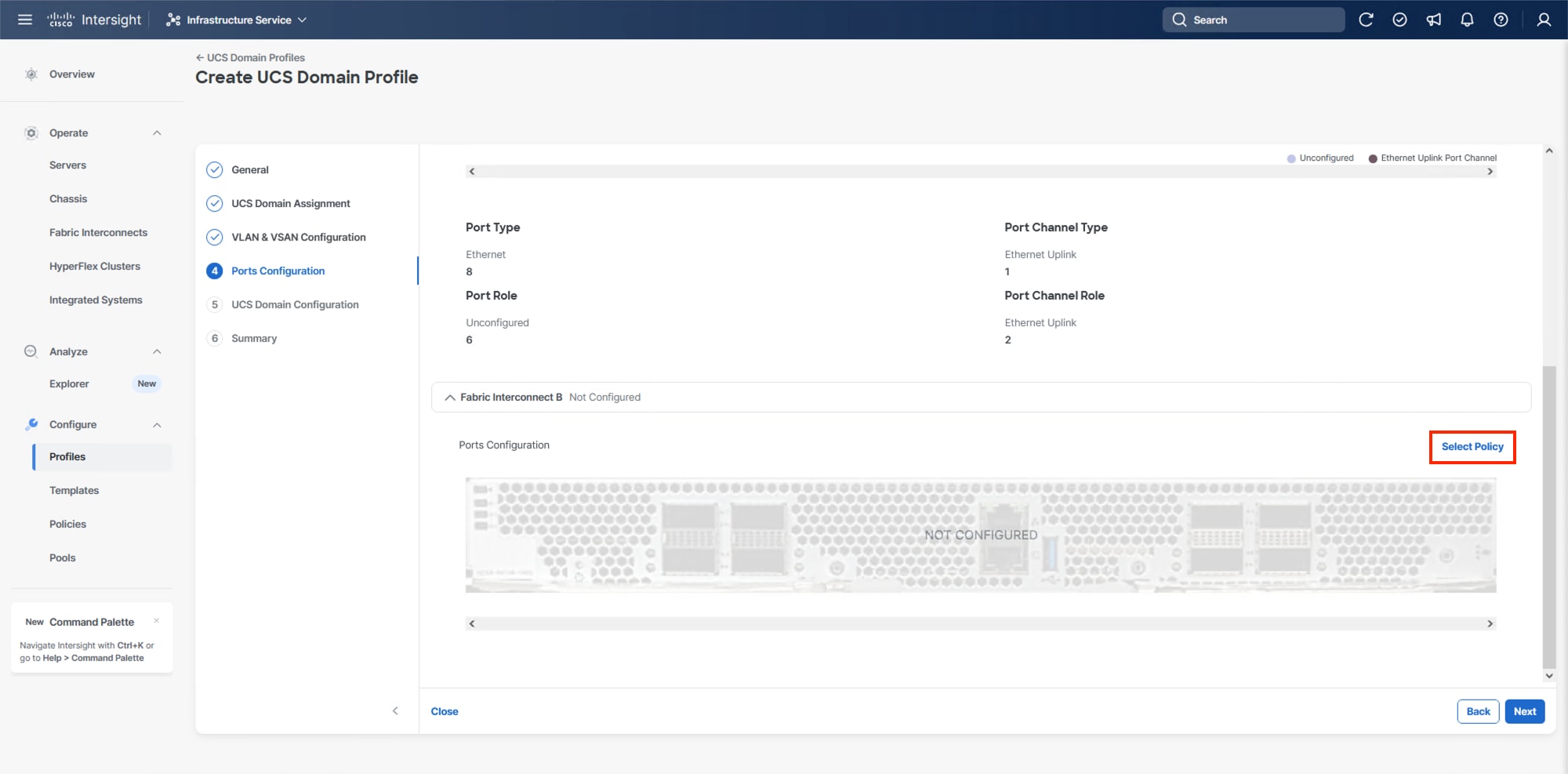

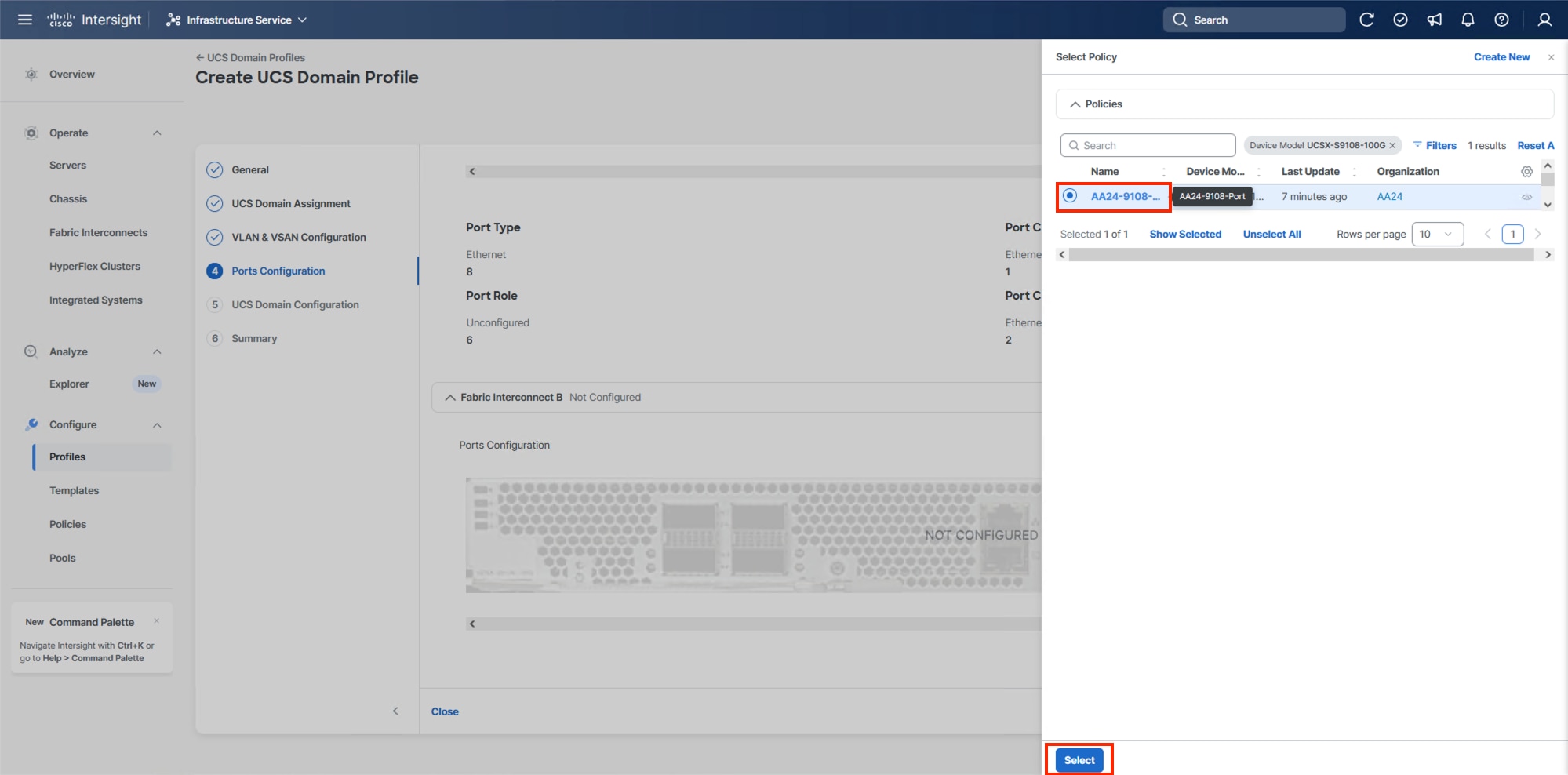

Step 14. Click Save.

Step 15. Scroll down to Fabric Interconnect B and click Select Policy.

Step 16. On the right-side panel, select the previously created Port Policy and click Select.

Note: Because FC port channels are not needed in this design, the same Port Policy can be used on both fabrics.

Step 17. Click Save.

Step 18. Click Next.

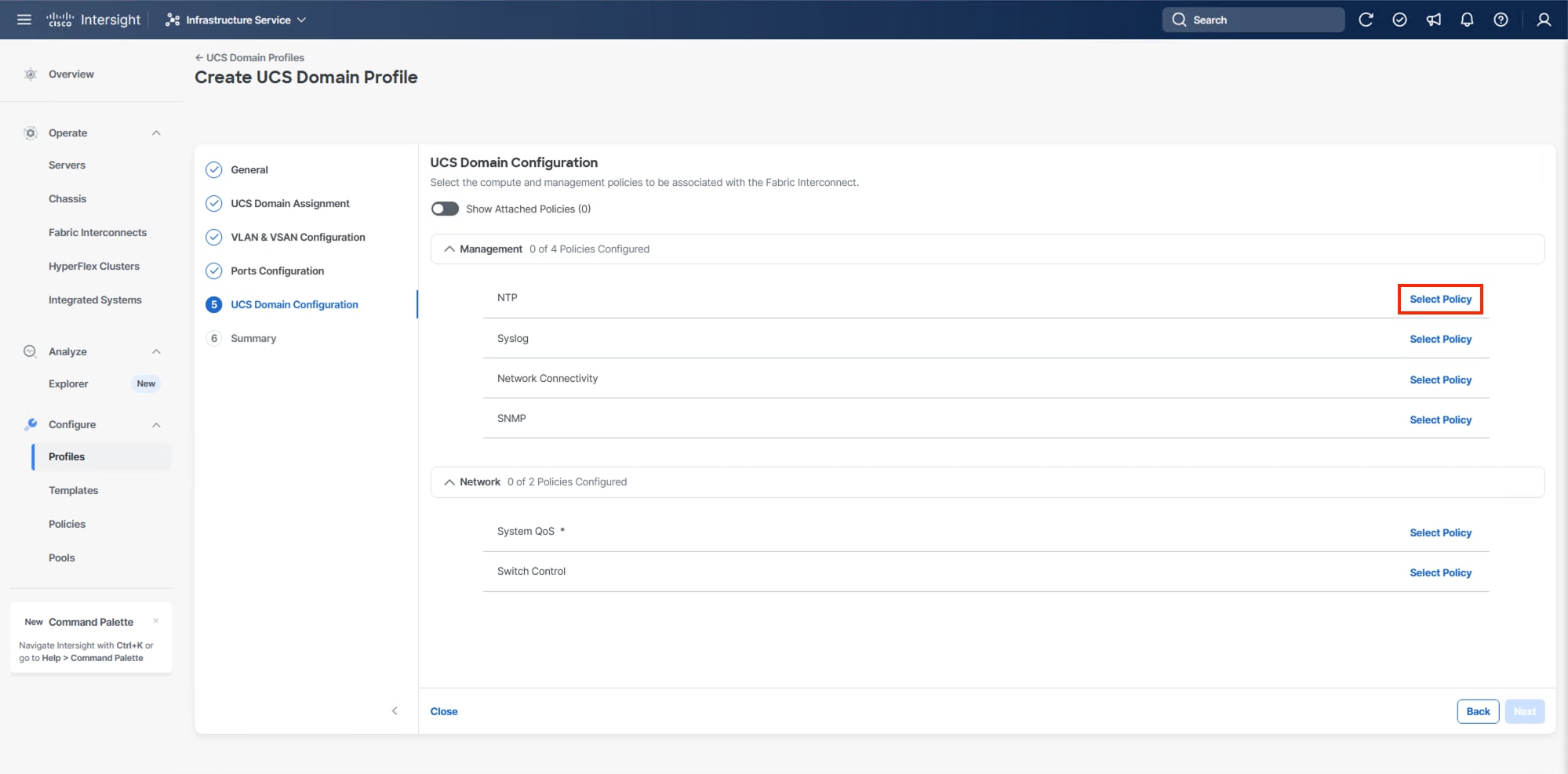

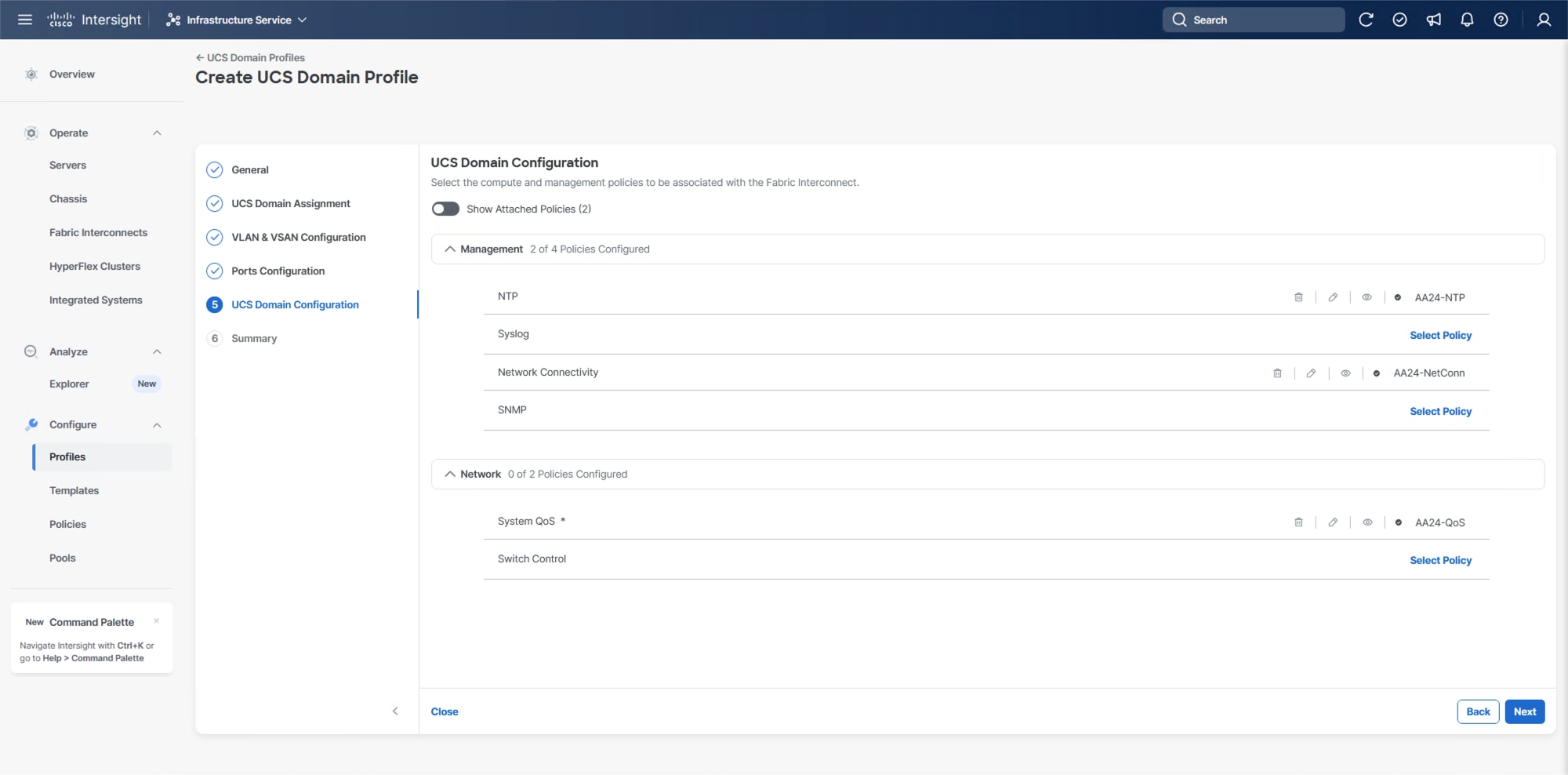

Configure UCS Domain

Under UCS domain configuration, additional policies can be configured to set up NTP, Syslog, DNS settings, SNMP, QoS and the UCS operating mode (end host or switch mode). For this deployment, four policies (NTP, Network Connectivity, SNMP, and System QoS) will be configured.

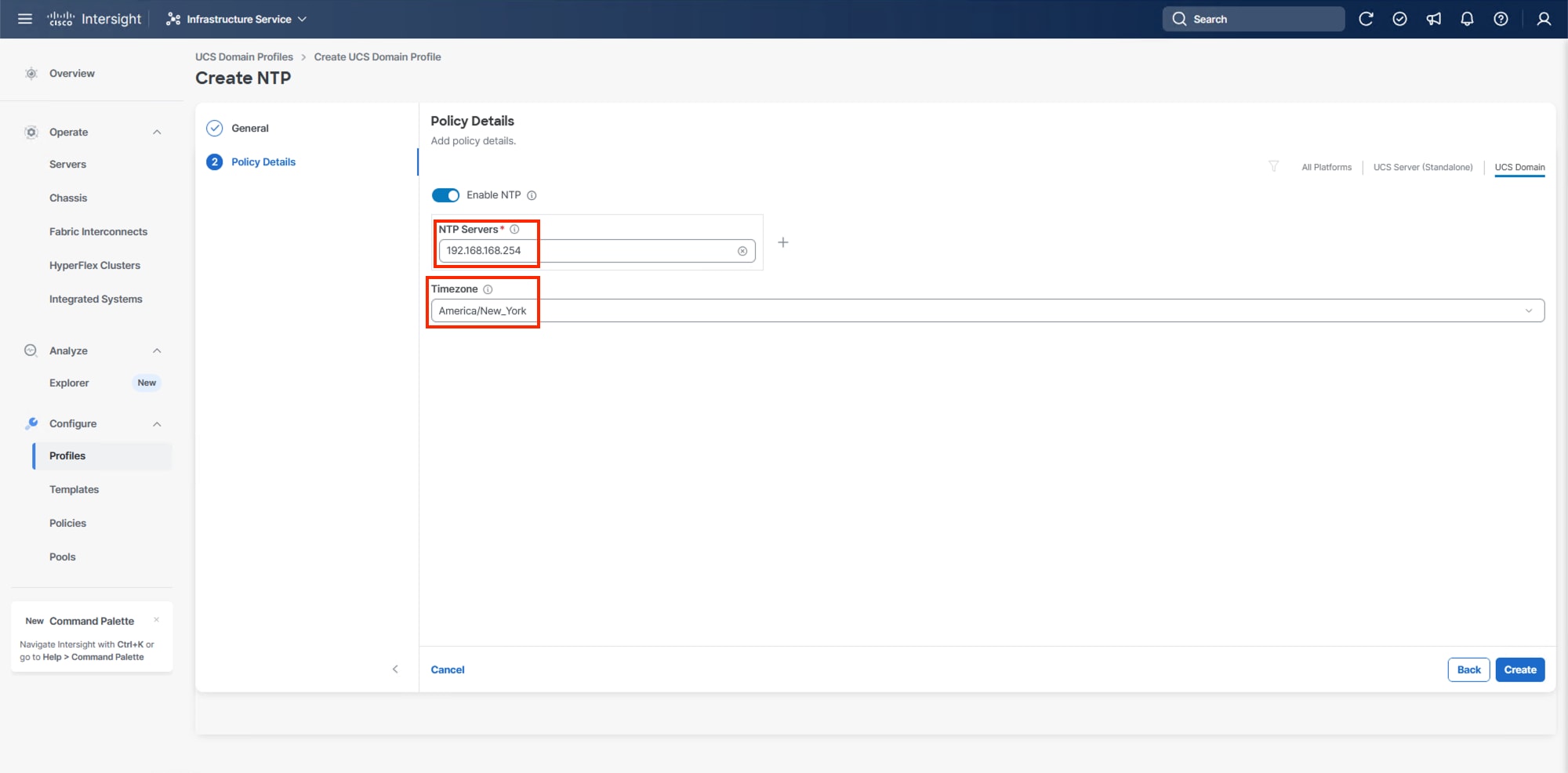

Procedure 1. Configure NTP Policy

Step 1. Click Select Policy next to NTP and in the pane on the right, click Create New.

Step 2. Verify the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-NTP).

Step 3. Click Next.

Step 4. Verify that Enable NTP is selected, provide the first NTP server IP address, and select the time zone from the drop-down list.

Step 5. (Optional) Add a second NTP server by clicking + next to the first NTP server IP address.

Step 6. Click Create.

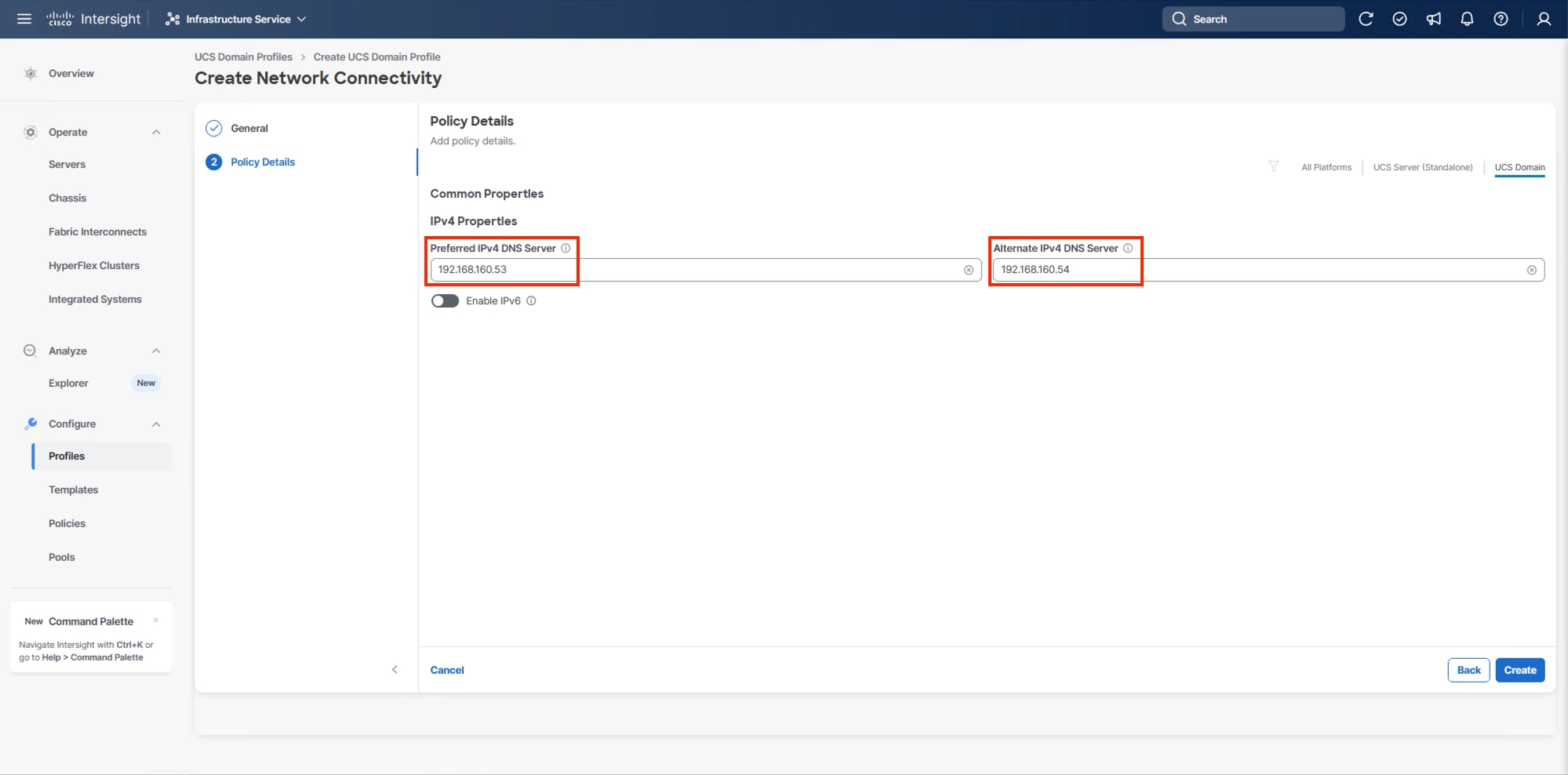

Procedure 2. Configure Network Connectivity Policy

Step 1. Click Select Policy next to Network Connectivity and then, in the pane on the right, click Create New.

Step 2. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-NetConn).

Step 3. Click Next.

Step 4. Provide the appropriate DNS server IP addresses for the Cisco UCS domain.

Step 5. Click Create.

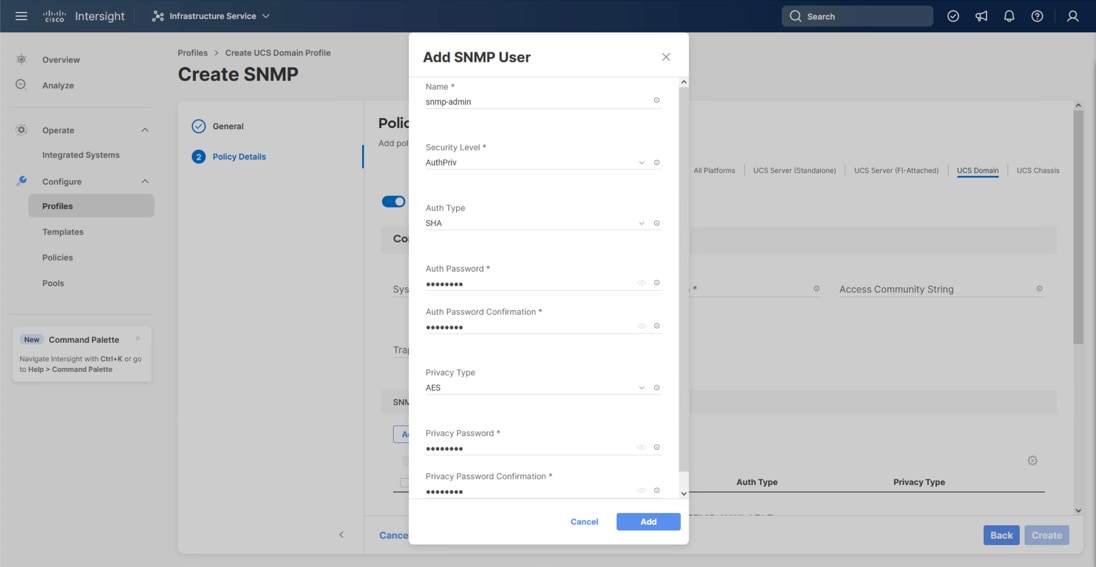

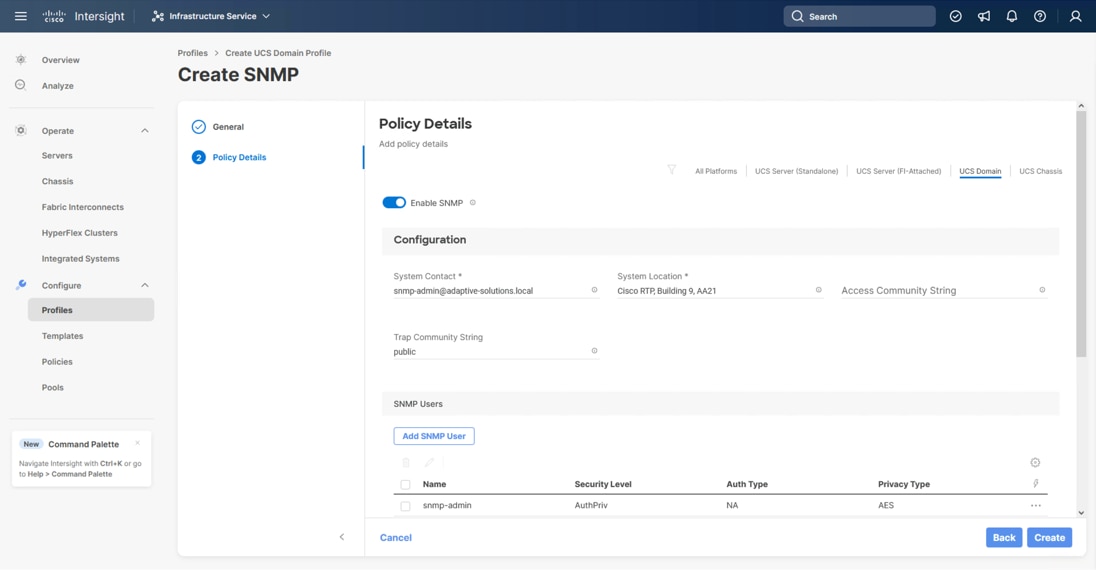

Procedure 3. Configure SNMP Policy (Optional)

Step 1. Click Select Policy next to SNMP and then, in the pane on the right, click Create New.

Step 2. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-SNMP).

Step 3. Click Next.

Step 4. Provide a System Contact email address, a System Location, and optional Community Strings.

Step 5. Under SNMP Users, click Add SNMP User.

Step 6. Optionally, add an SNMP Trap Destination (for example, the NDFC IP Address). If the SNMP Trap Destination is V2, you must add a Trap Community String.

Step 7. Click Create.
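The one conditional rule in the SNMP steps (a V2 trap destination requires a Trap Community String) can be captured in a short pre-check. The following Python sketch uses hypothetical `SnmpPolicy` and `TrapDestination` dataclasses that mirror the fields named in Steps 4 through 6; it models only those rules and is not the Intersight policy schema. The contact address and trap address are placeholders.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class TrapDestination:
    address: str
    version: str                      # "V2" or "V3"
    community: Optional[str] = None   # Trap Community String


@dataclass
class SnmpPolicy:
    contact: str                      # System Contact email address
    location: str                     # System Location
    traps: List[TrapDestination] = field(default_factory=list)


def snmp_problems(policy: SnmpPolicy) -> List[str]:
    problems = []
    if not policy.contact:
        problems.append("System Contact is empty")
    if not policy.location:
        problems.append("System Location is empty")
    for trap in policy.traps:
        if trap.version not in ("V2", "V3"):
            problems.append(f"{trap.address}: unknown SNMP version {trap.version!r}")
        # Rule from Step 6: a V2 trap destination needs a Trap Community String.
        if trap.version == "V2" and not trap.community:
            problems.append(f"{trap.address}: V2 trap destination needs a Trap Community String")
    return problems


# Hypothetical policy with a V2 trap destination that is missing its community string.
draft = SnmpPolicy("admin@example.com", "Lab Rack 1", [TrapDestination("192.0.2.50", "V2")])
print(snmp_problems(draft))
```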

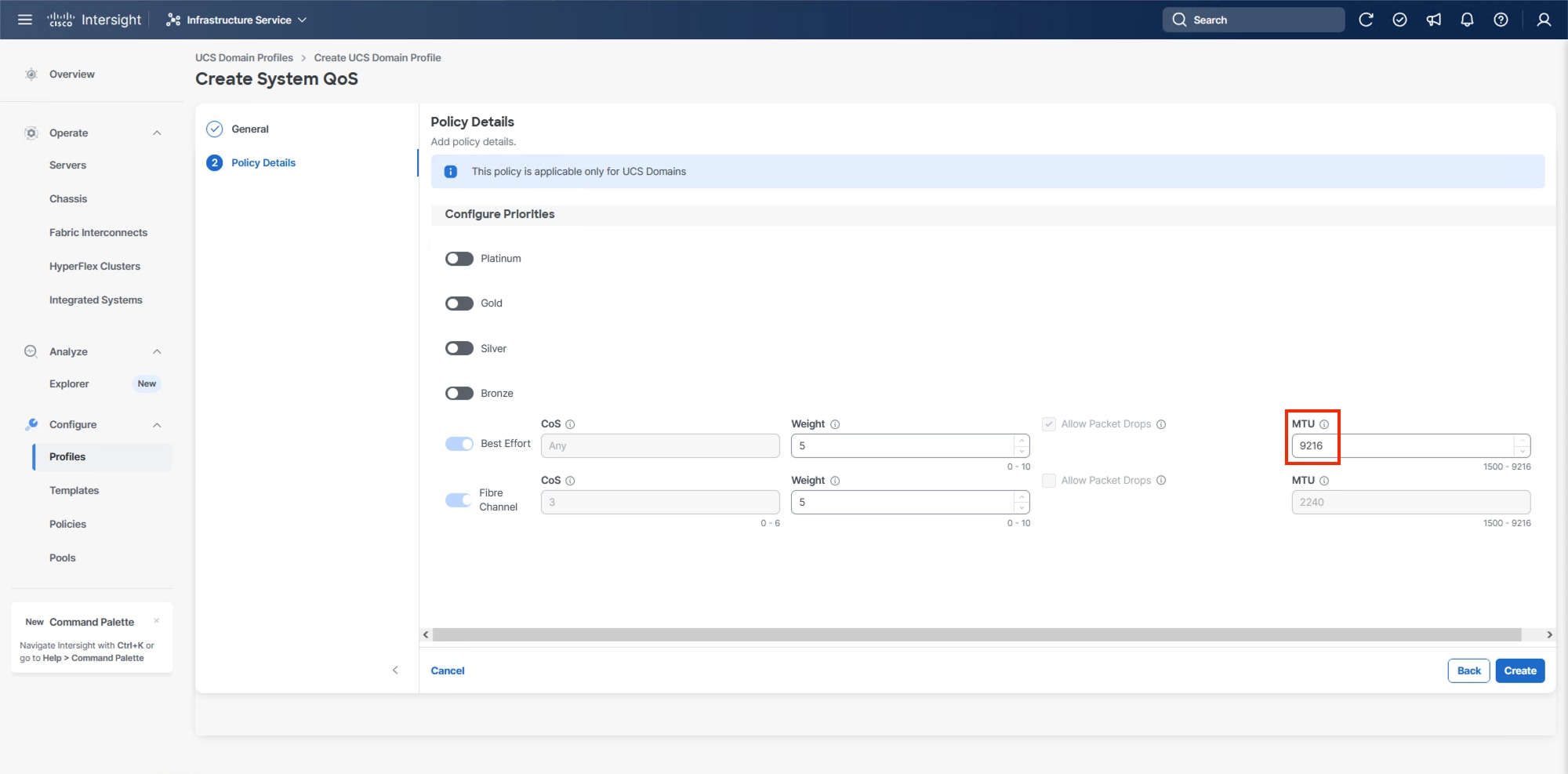

Procedure 4. Configure System QoS Policy

The System QoS policy is adjusted to enable jumbo frames on the Ethernet uplinks. In this design, all Ethernet traffic is placed in the common Best Effort class, whose MTU is increased. Other QoS strategies that assign weighted priorities can be implemented, but any such settings must match those configured upstream of the fabric interconnects.

Step 1. Click Select Policy next to System QoS* and in the pane on the right, click Create New.

Step 2. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-QoS).

Step 3. Click Next.

Step 4. Change the MTU for Best Effort class to 9216.

Step 5. Click Create.

Step 6. Click Next.
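The reason 9216 is used for the Best Effort class can be shown with simple arithmetic: a host interface with a 9000-byte IP MTU emits 802.1Q-tagged frames of 9000 + 14 (Ethernet header) + 4 (VLAN tag) + 4 (FCS) = 9022 bytes, which fits under 9216 with headroom. The following Python sketch treats each configured hop value as a maximum frame size, which is the conservative reading. The 9000-byte VMkernel MTU and the upstream port value are assumptions, not settings from this procedure.

```python
# Byte counts for the Ethernet framing around an IP packet.
ETH_HEADER = 14  # destination MAC (6) + source MAC (6) + EtherType (2)
DOT1Q_TAG = 4    # 802.1Q VLAN tag
FCS = 4          # frame check sequence


def frame_size(ip_mtu: int, tagged: bool = True) -> int:
    """Largest Ethernet frame produced by a host interface with the given IP MTU."""
    return ip_mtu + ETH_HEADER + (DOT1Q_TAG if tagged else 0) + FCS


def undersized_hops(path: dict, ip_mtu: int) -> list:
    """Hops whose configured maximum frame size is smaller than the frames the host sends."""
    needed = frame_size(ip_mtu)
    return [hop for hop, max_frame in path.items() if max_frame < needed]


# Hypothetical path: a 9000-byte VMkernel MTU (assumption) across the FI Best Effort
# class (9216, Step 4) and an upstream switch port assumed to also be set to 9216.
path = {"FI Best Effort class": 9216, "upstream Nexus port": 9216}
print(frame_size(9000))             # 9022
print(undersized_hops(path, 9000))  # []
```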

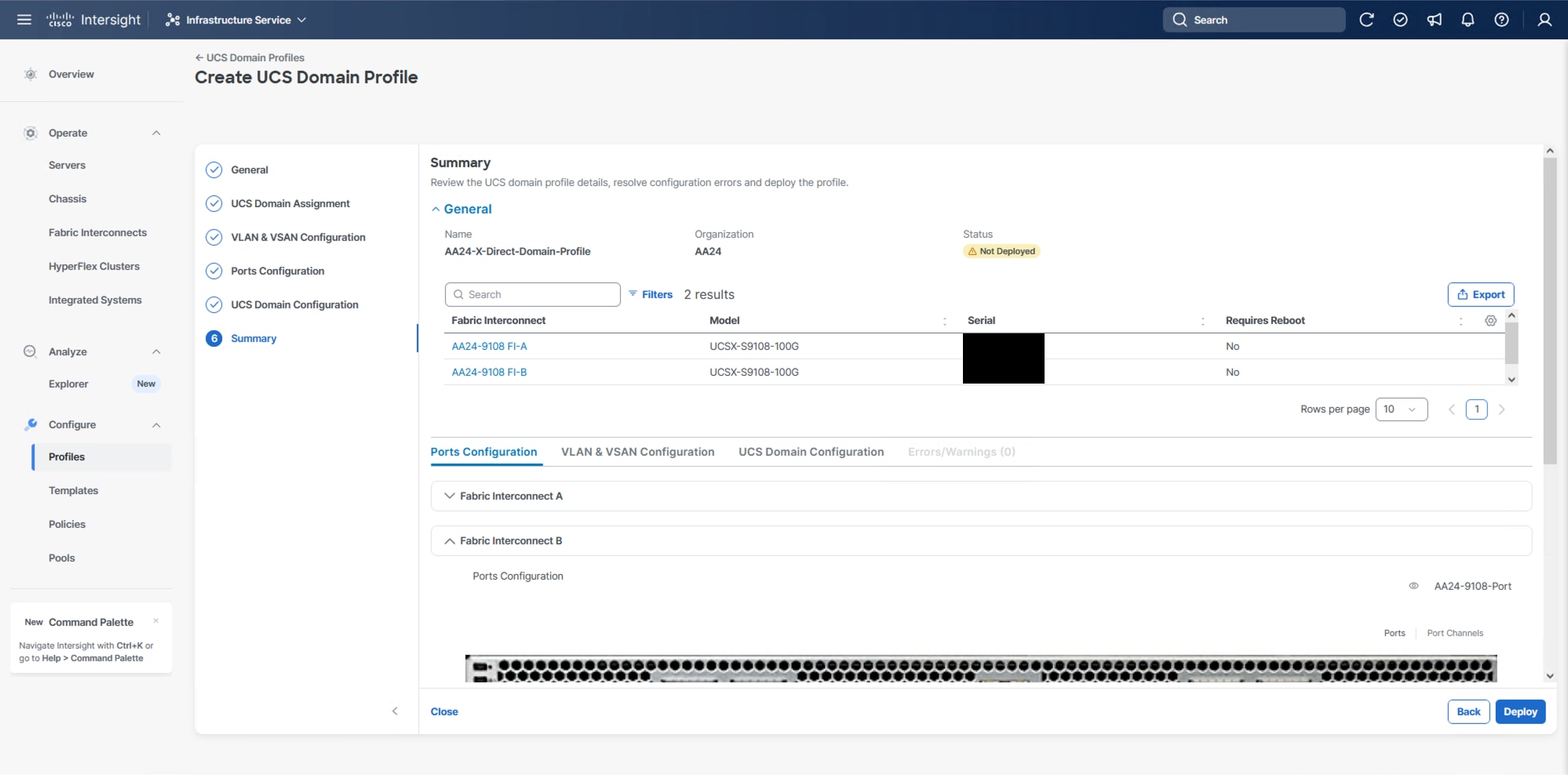

Procedure 5. Deploy the UCS Domain Profile

Step 1. Verify all settings, including the fabric interconnect settings, by expanding the settings and making sure that the configuration is correct.

Step 2. Click Deploy.

Step 3. Acknowledge any warnings and click Deploy again.

Note: The system will take some time to validate and configure the settings on the fabric interconnects. Log into the console servers to see when the Cisco UCS fabric interconnects have finished configuration and are successfully rebooted.

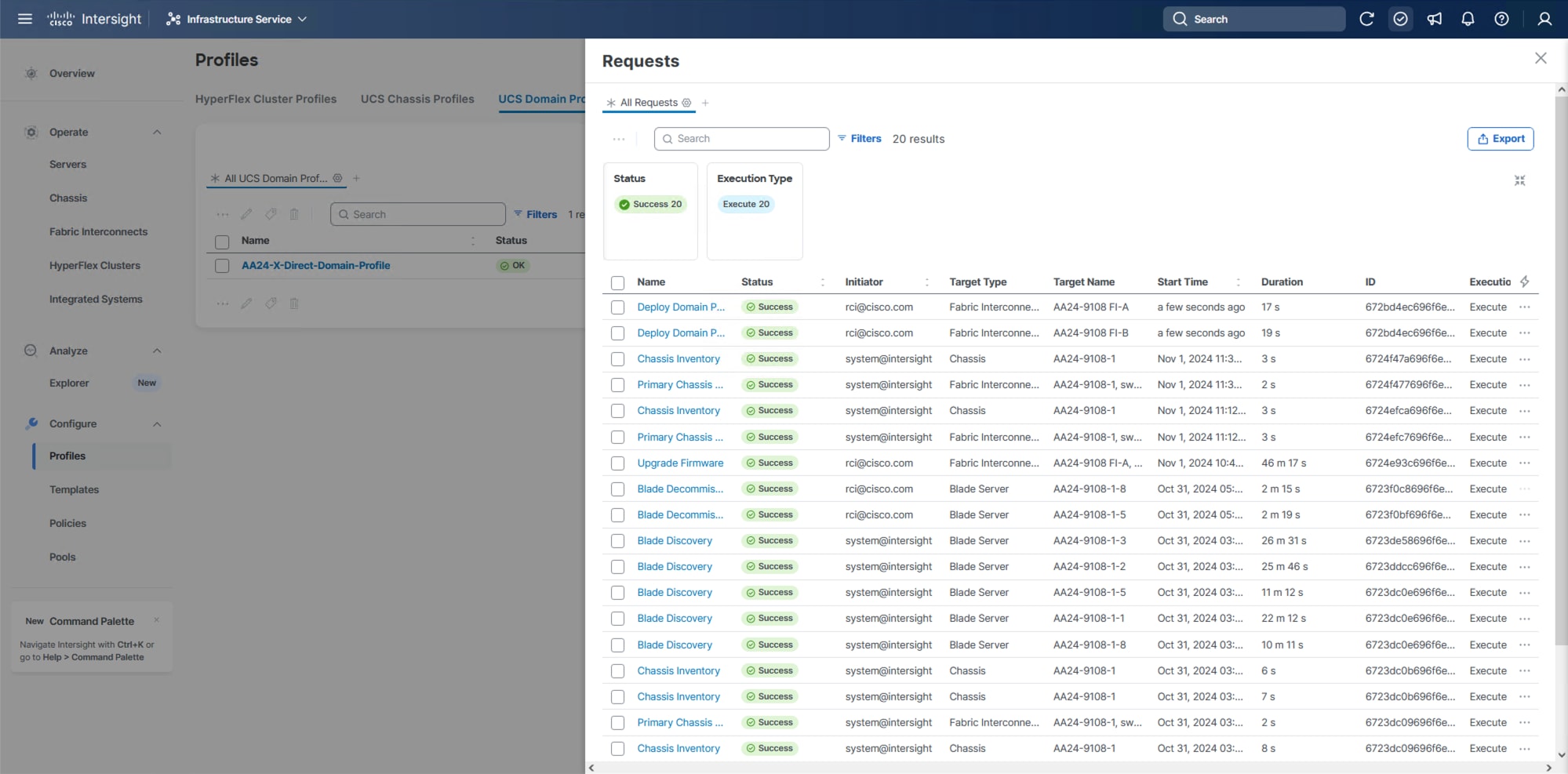

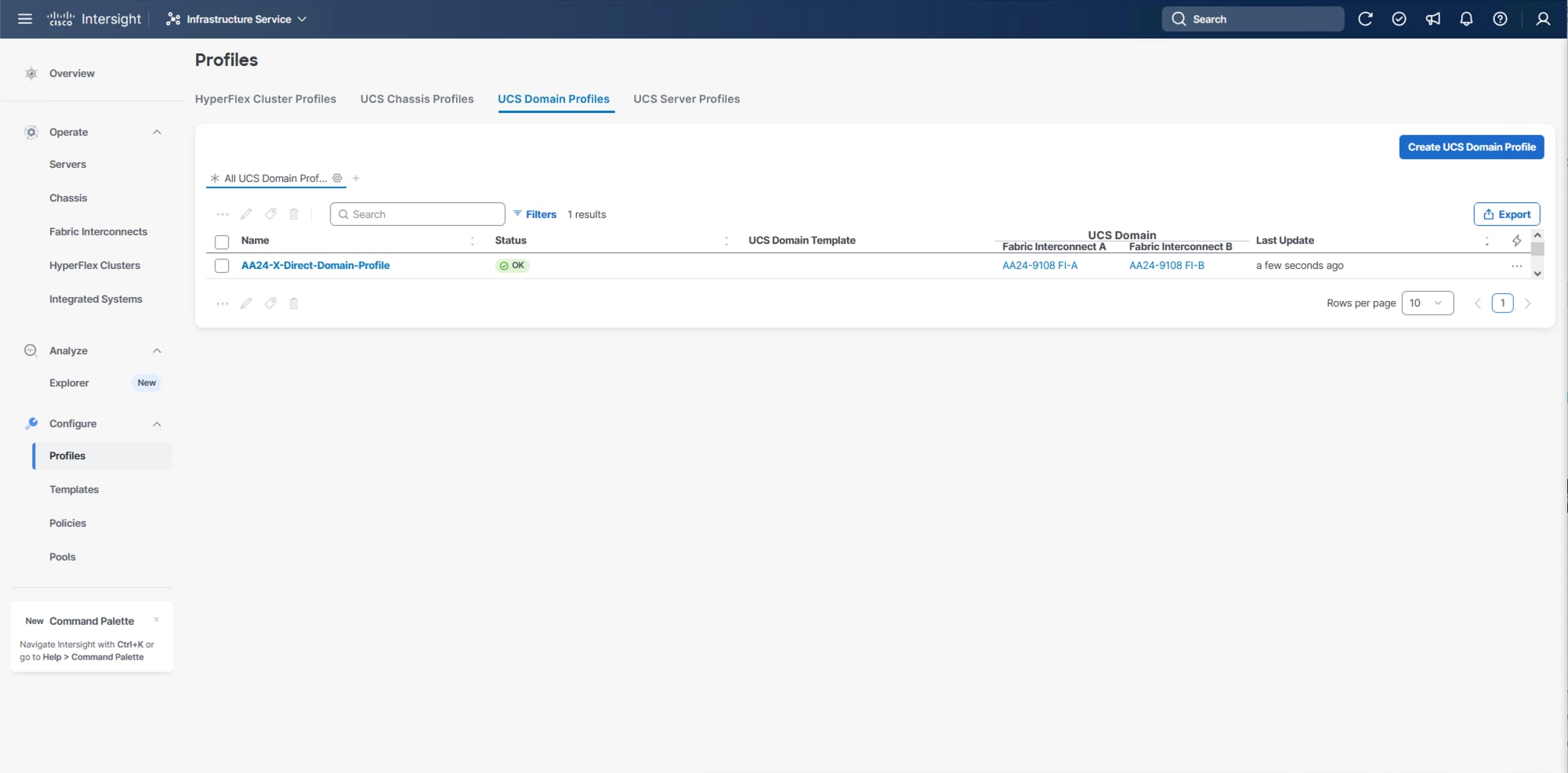

Procedure 6. Verify Cisco UCS Domain Profile Deployment

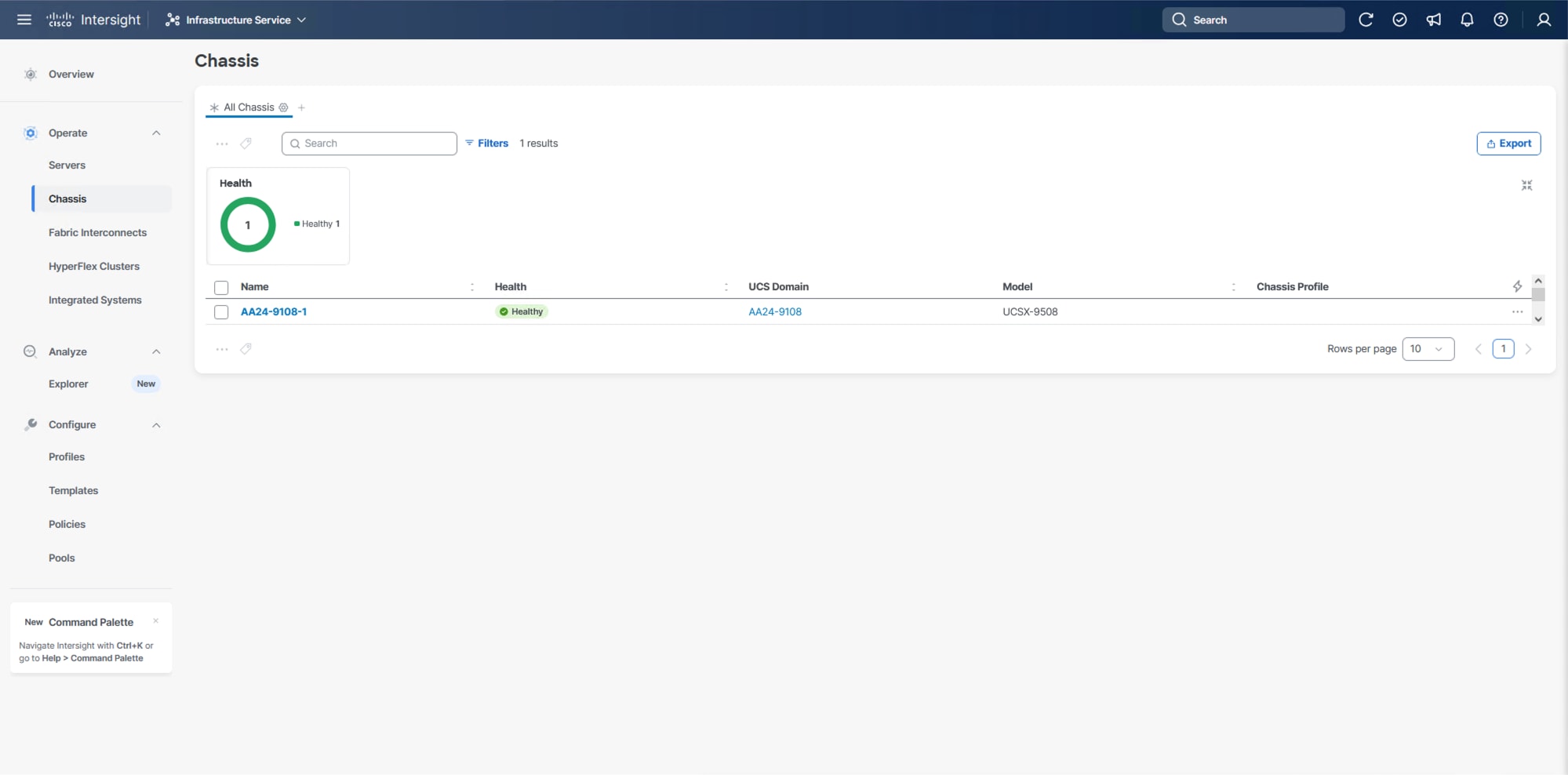

When the Cisco UCS domain profile has been successfully deployed, the Cisco UCS chassis and the blades should be successfully discovered.

Note: It takes some time to discover the blades for the first time. Watch the number of outstanding requests in Cisco Intersight.

Step 1. Log into Cisco Intersight. Go to Infrastructure Service > Configure > Profiles > UCS Domain Profiles and verify that the domain profile has been successfully deployed.

Step 2. Verify that the chassis has been discovered and is visible from Infrastructure Service > Operate > Chassis.

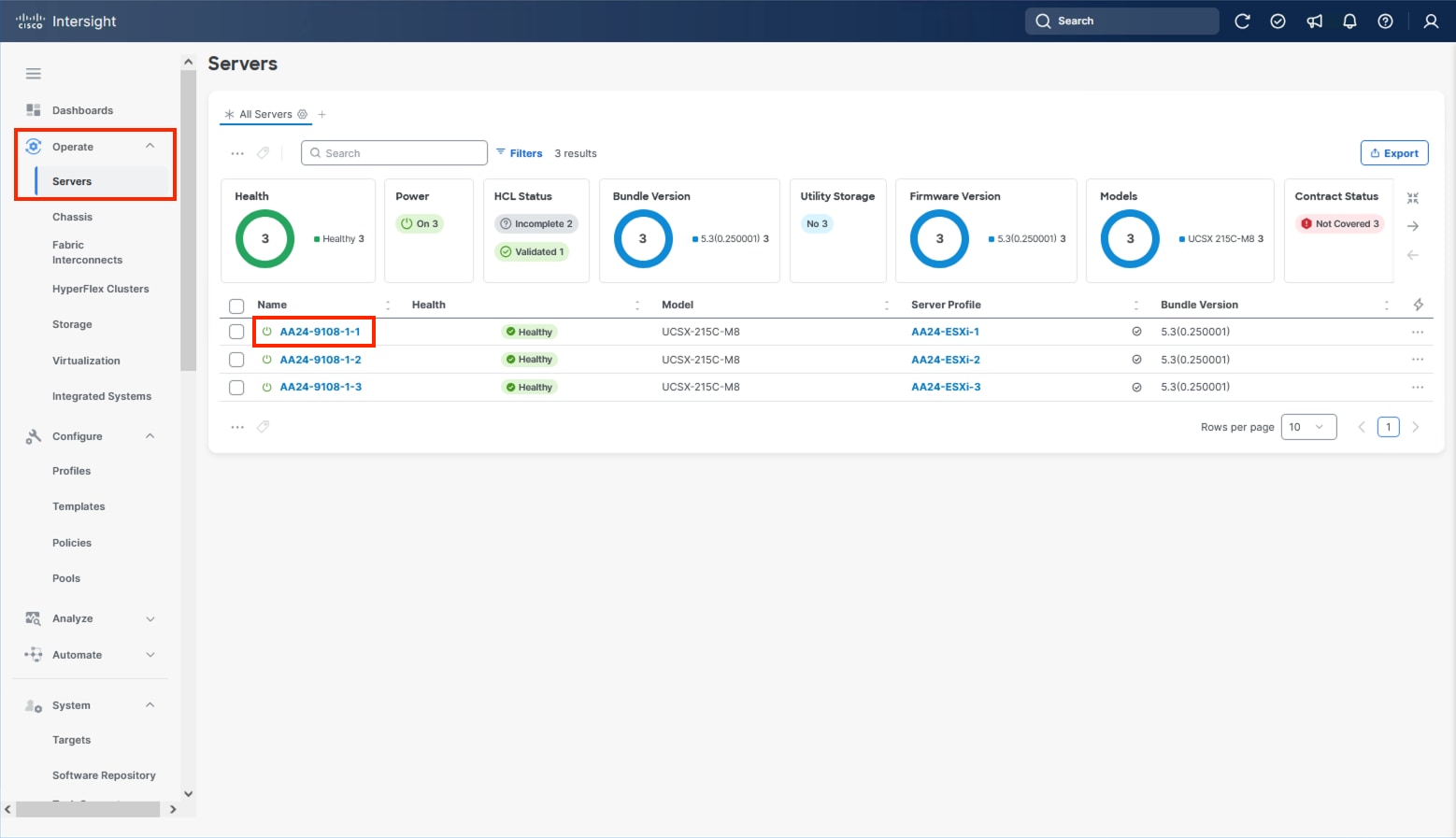

Step 3. Verify that the servers have been successfully discovered and are visible from Infrastructure Service > Operate > Servers.

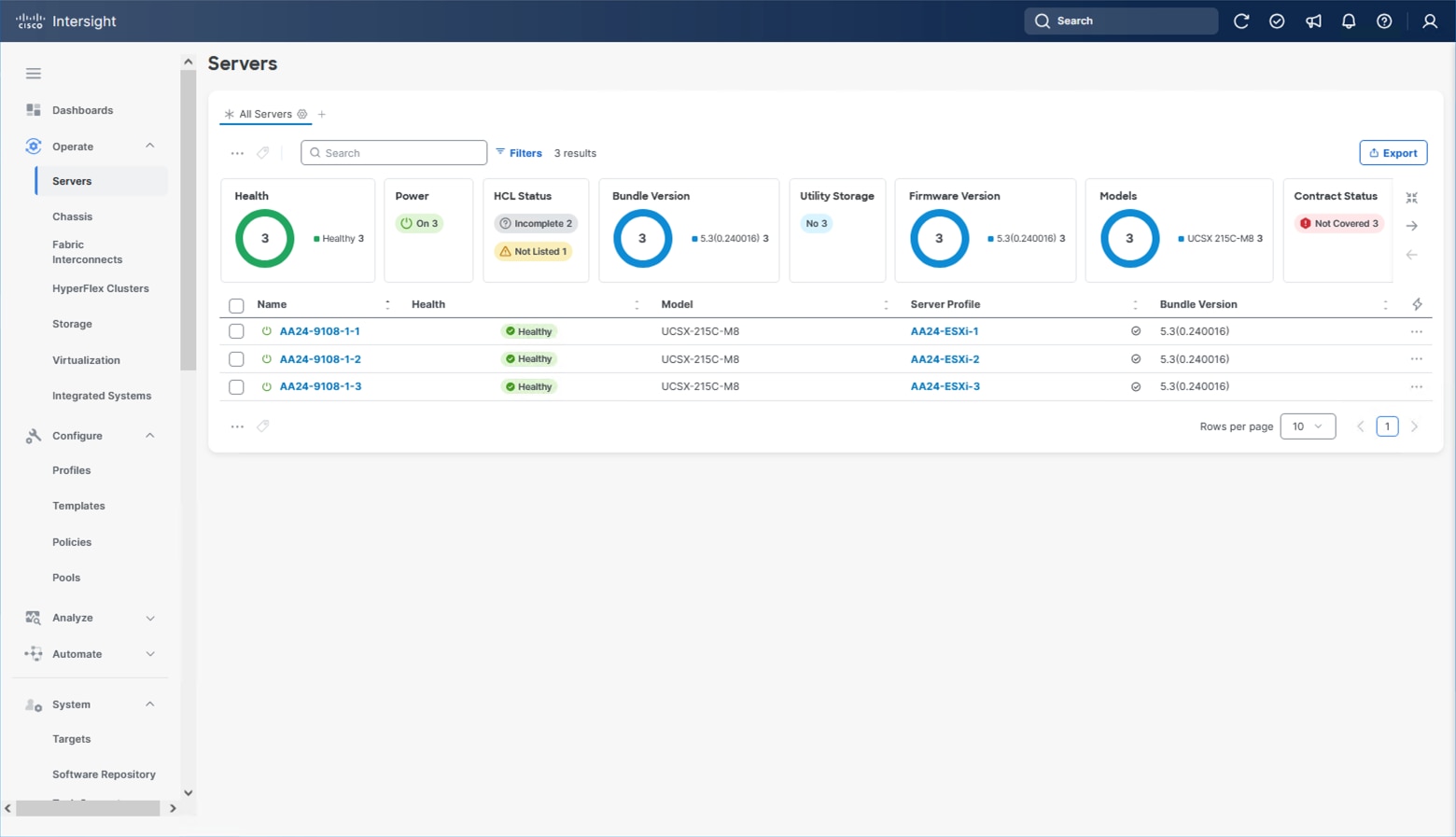

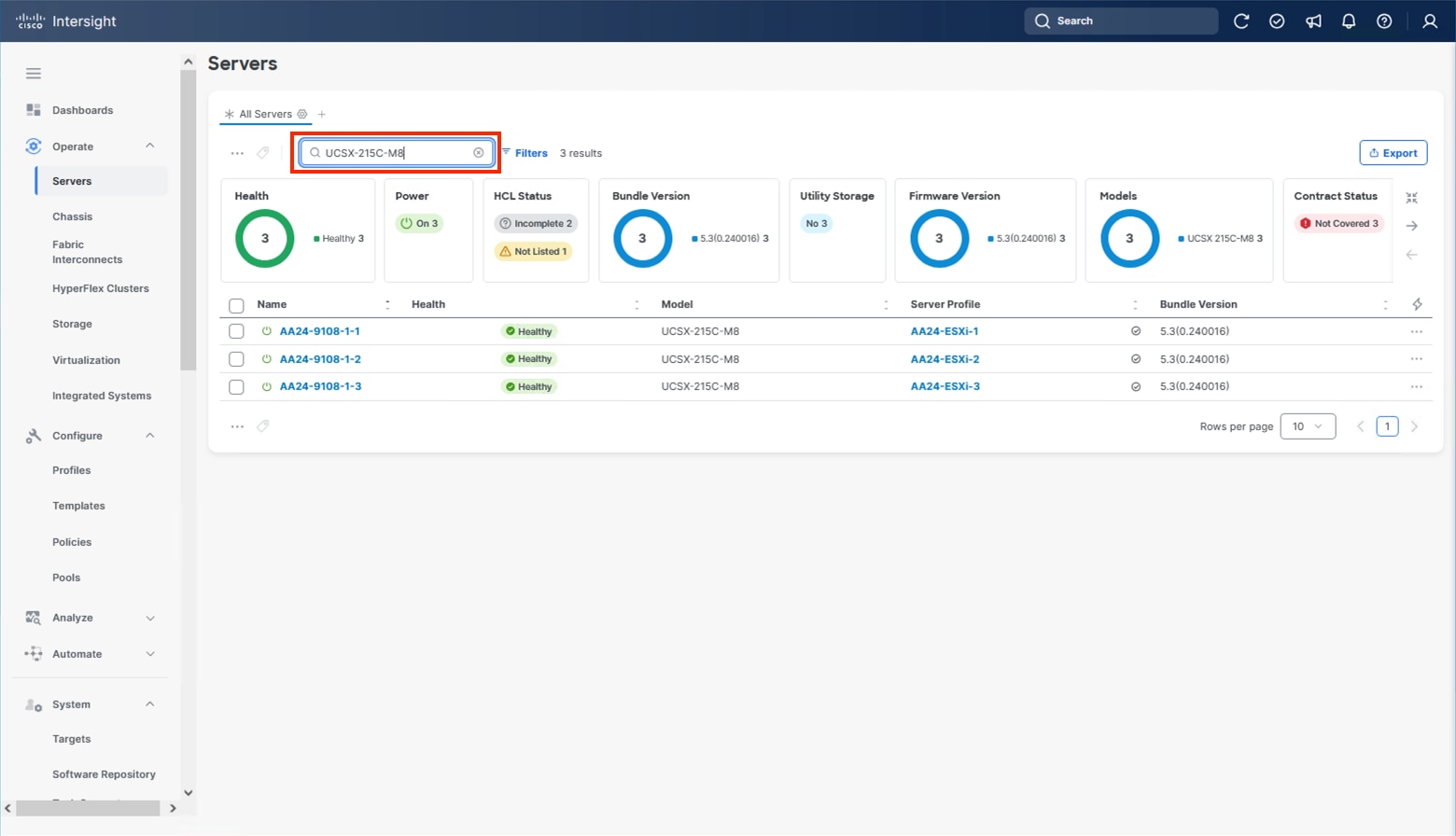

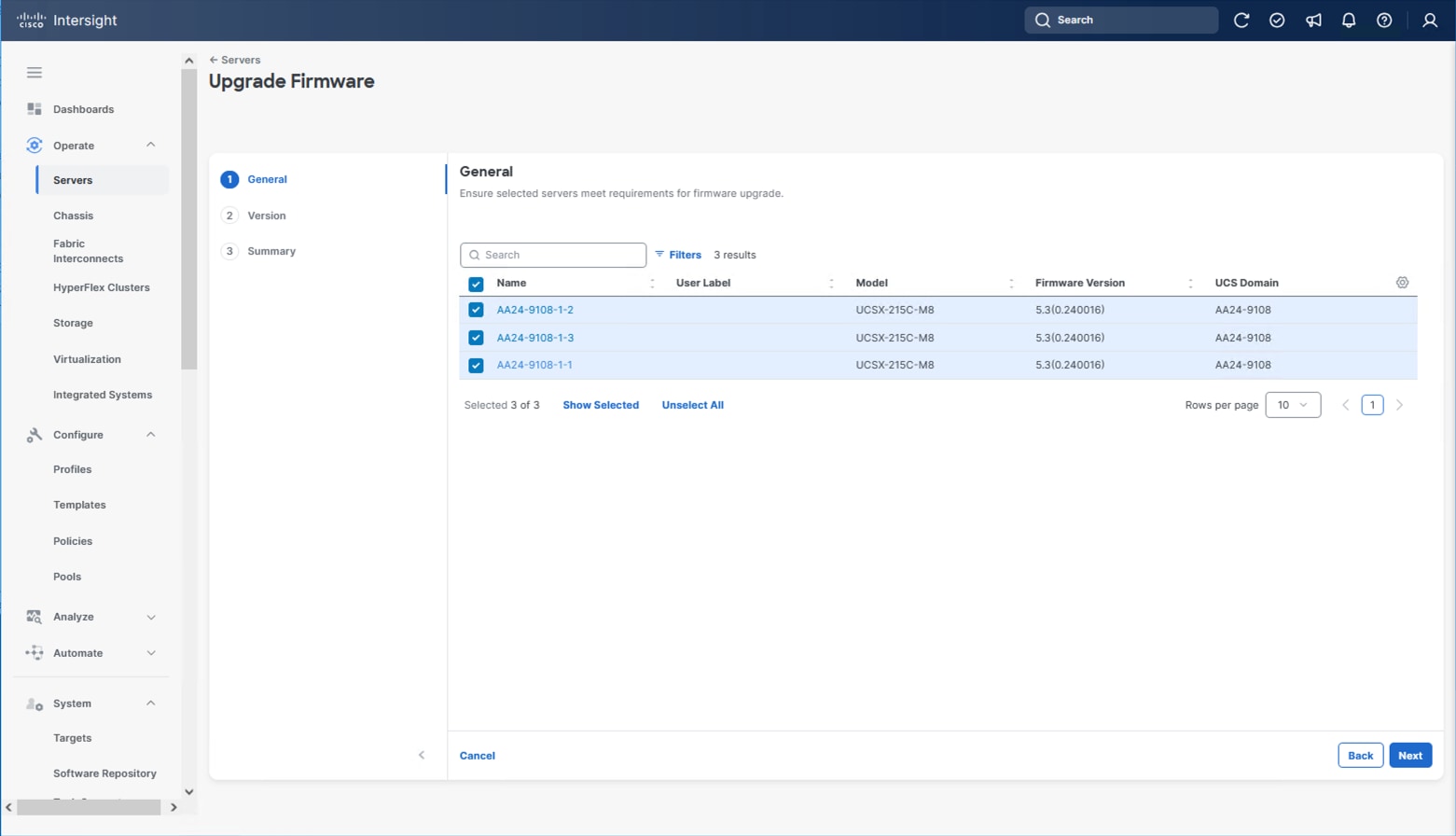

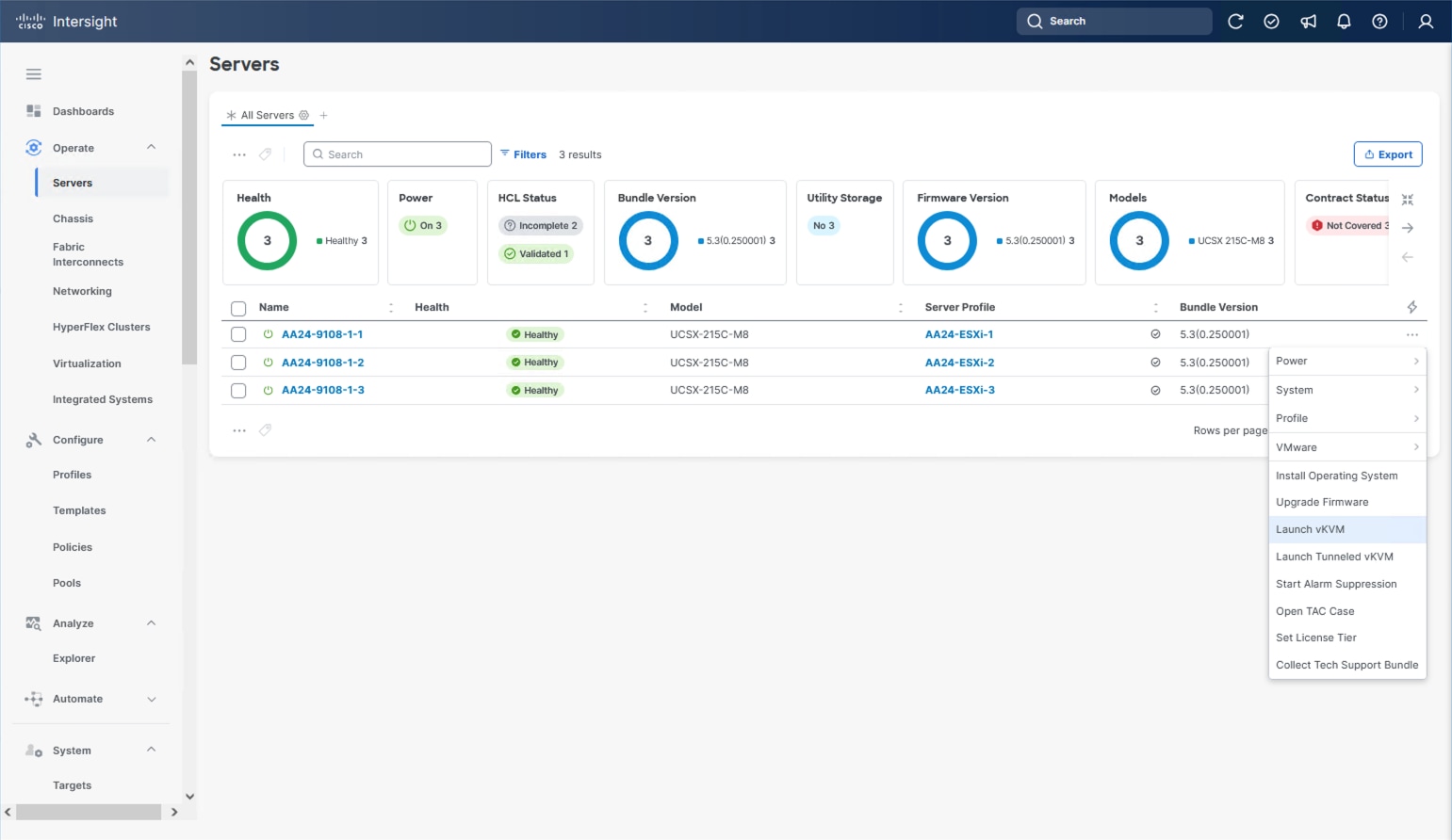

Procedure 7. Update Server Firmware

Step 1. After the servers are discovered, upgrade them from the Servers view at Infrastructure Service > Operate > Servers.

Step 2. Optionally, if multiple server types are present, filter the list by entering the desired model (for example, UCSX-215C-M8) in the filter box.

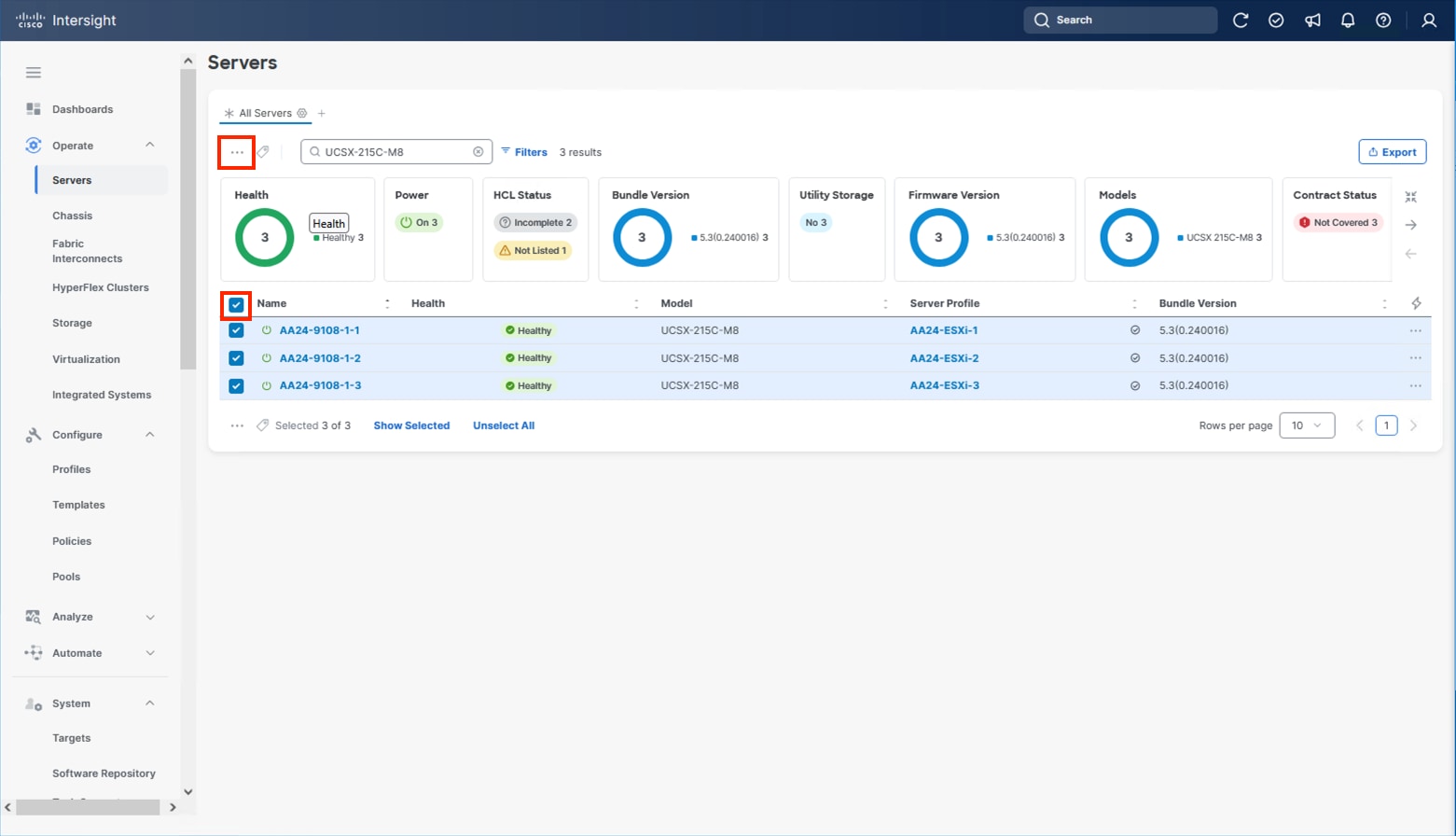

Step 3. Select all servers in the resulting list by selecting the check box at the left of the column header, or manually select a specific set from the results.

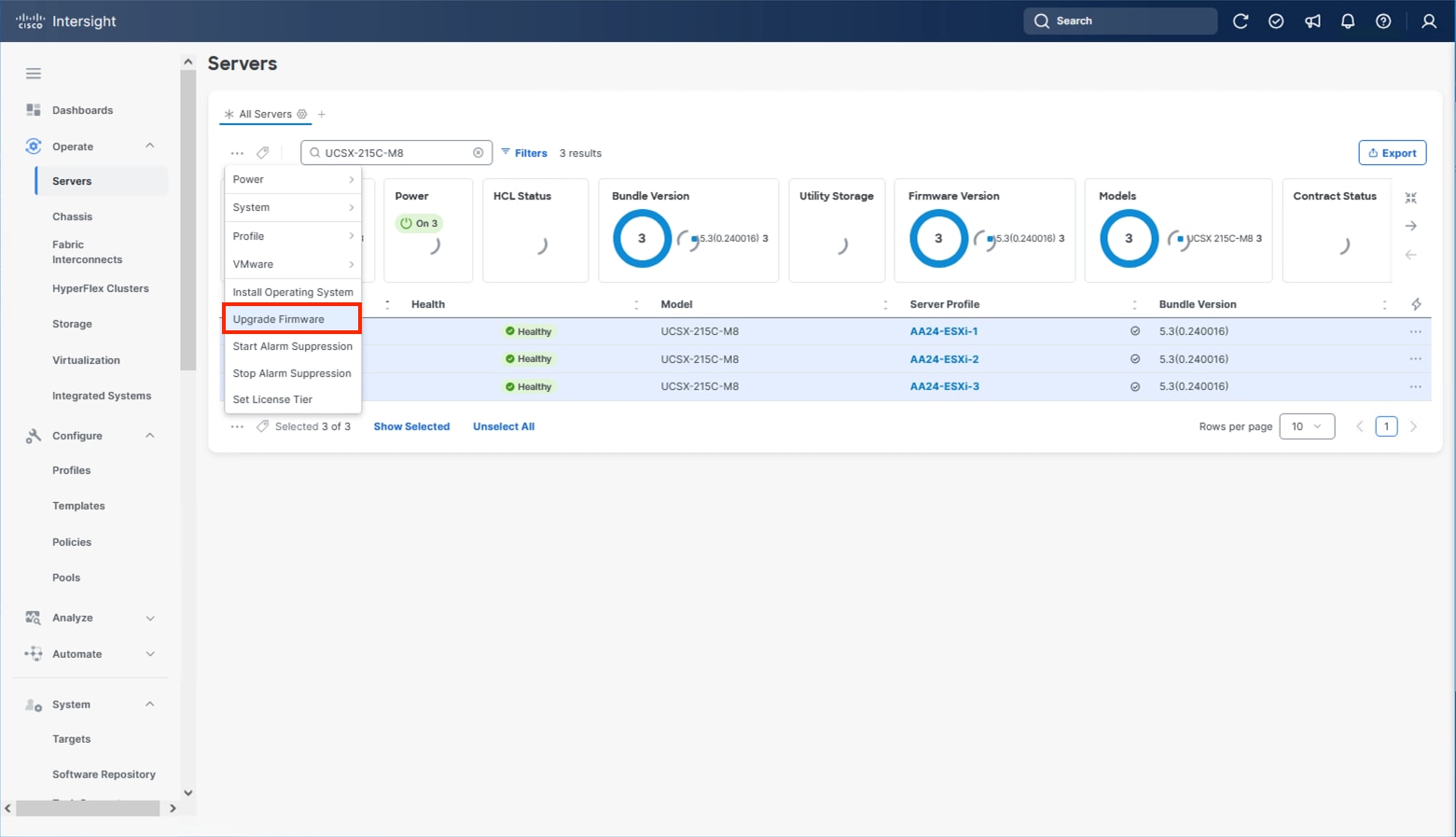

Step 4. Click the ellipsis (…) near the top left for the drop-down list.

Step 5. Select Upgrade Firmware.

Step 6. Click Start and click Next after confirming that the servers to be upgraded have been selected.

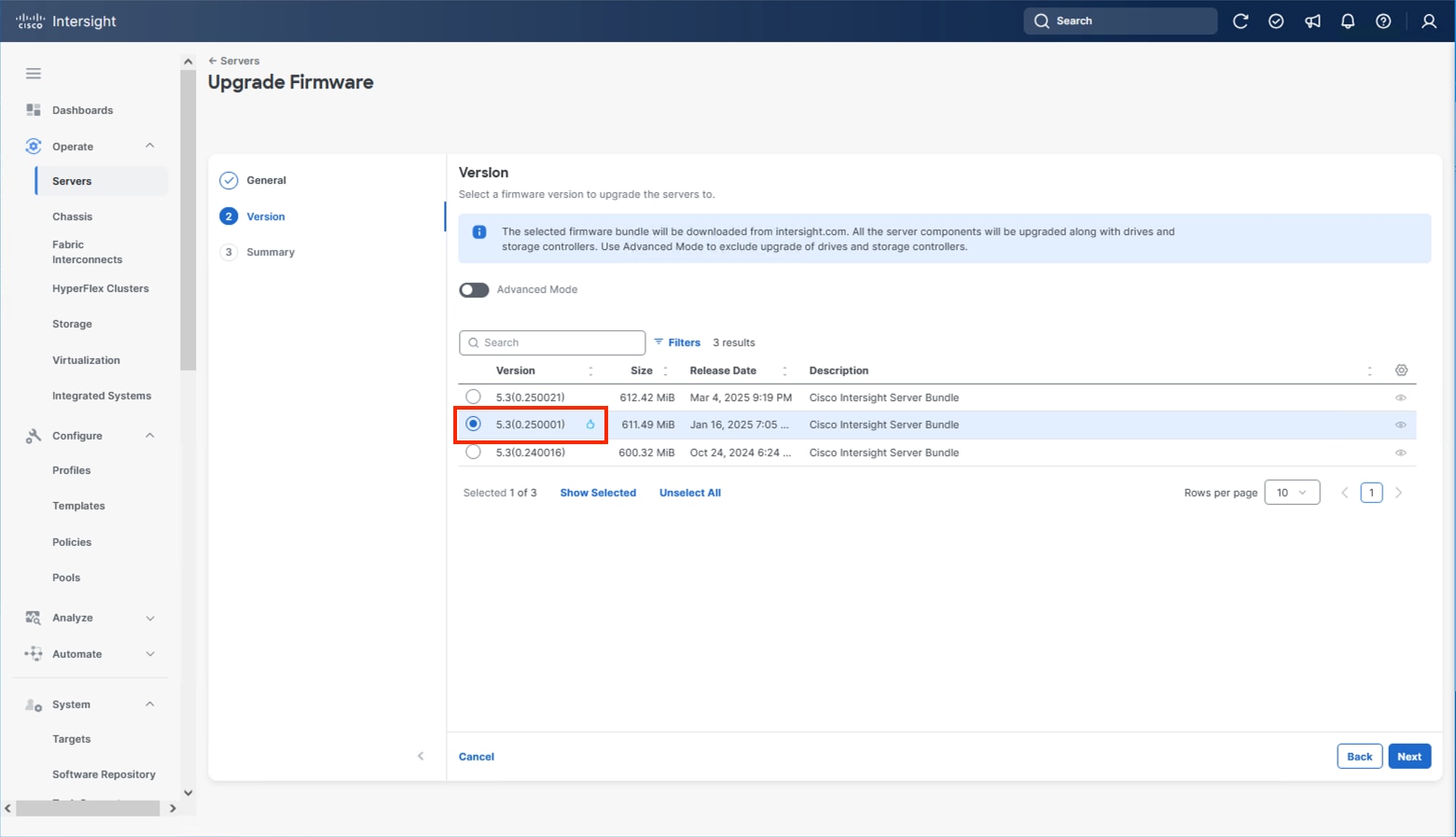

Step 7. Select the version to upgrade the servers to and click Next.

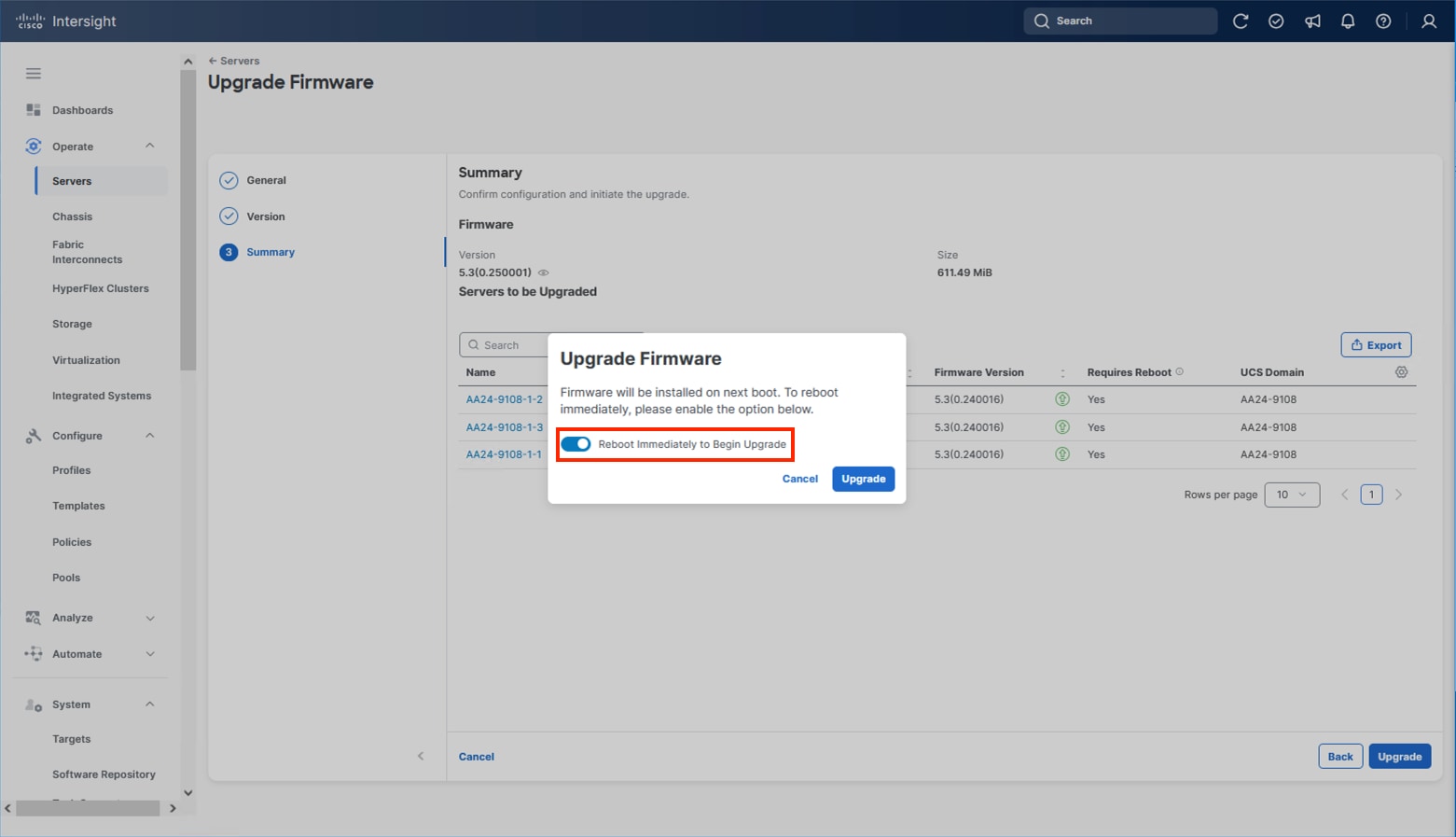

Step 8. Click Upgrade, turn on the Reboot Immediately to Begin Upgrade toggle, and click Upgrade again.

Firmware upgrade times will vary, but 30-45 minutes is a safe estimate for completion.

Configure Cisco UCS Chassis Profile (Optional)

The Cisco UCS Chassis profile in Cisco Intersight allows you to configure various parameters for the chassis, including:

● IMC Access Policy: IP configuration for the in-band chassis connectivity. This setting is independent of Server IP connectivity and only applies to communication to and from the chassis.

● SNMP Policy to define SNMP and SNMP trap settings.

● Power Policy to enable power management and power supply redundancy mode.

● Thermal Policy to control the speed of fans.

A chassis policy can be assigned to any number of chassis profiles to provide a configuration baseline for a chassis. In this deployment, no chassis profile was created or attached to the chassis, but you can create policies for SNMP or power parameters and attach them to the chassis.

Configure Server Profile Template

In the Cisco Intersight platform, a server profile enables resource management by simplifying policy alignment and server configuration. The server profiles are derived from a server profile template. A server profile template and its associated policies can be created using the server profile template wizard. After creating the server profile template, you can derive multiple consistent server profiles from the template.

The server profile templates captured in this deployment guide support AMD-based Cisco UCS X215c M8 compute nodes with 5th Generation VICs. Profile templates for AMD-based Cisco UCS M8 C-Series servers can be nearly identical to the configurations used in this document but can differ in aspects such as power policies. Intel-based X-Series or B-Series servers differ in their BIOS policies and so generally use separate templates.

The server profiles for this IP storage-based architecture configure NVMe/TCP storage connectivity and use a local M.2 boot option.

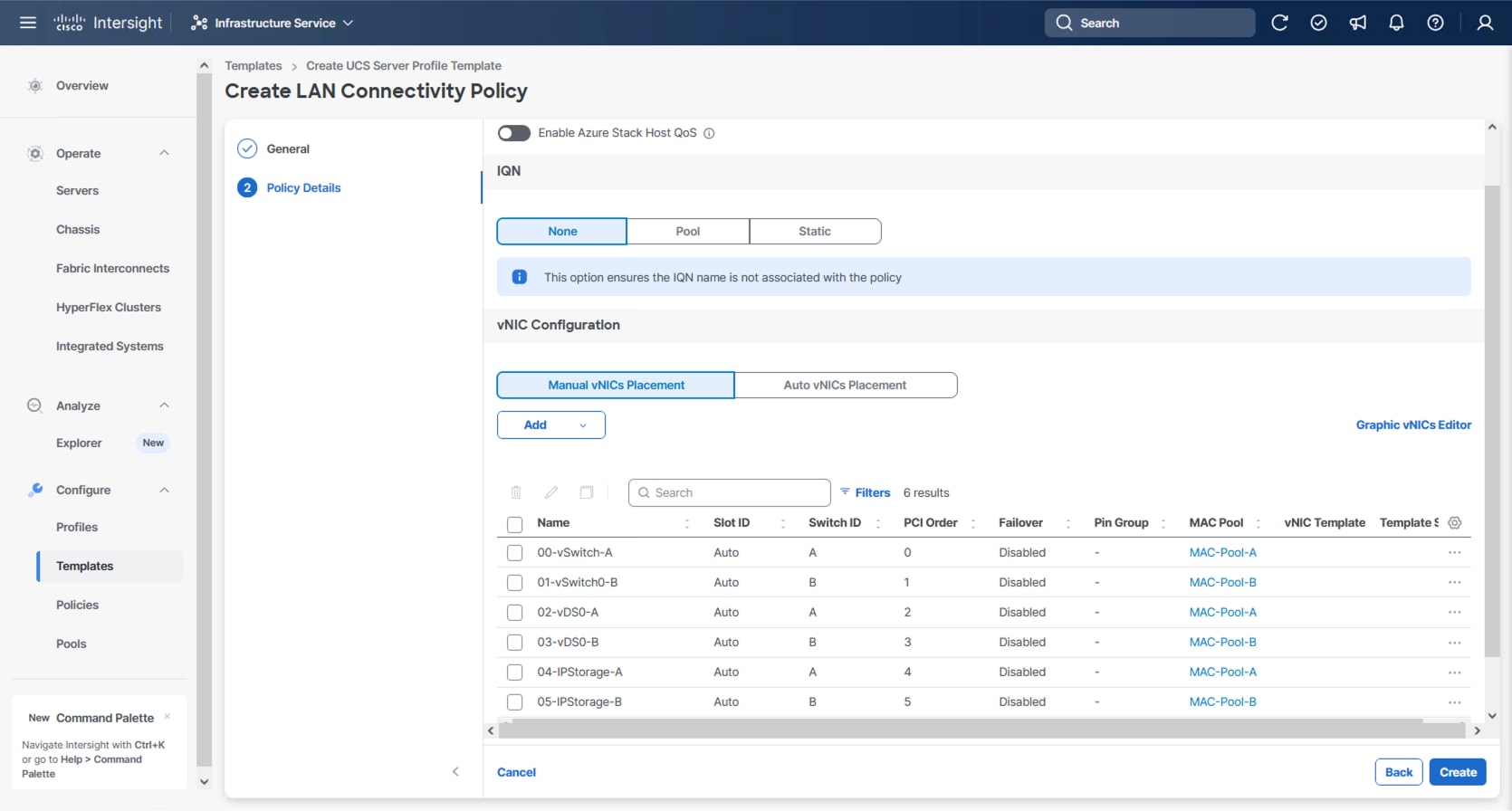

vNIC Placement for Server Profile Template

This section explains the vNIC layout used in this deployment.

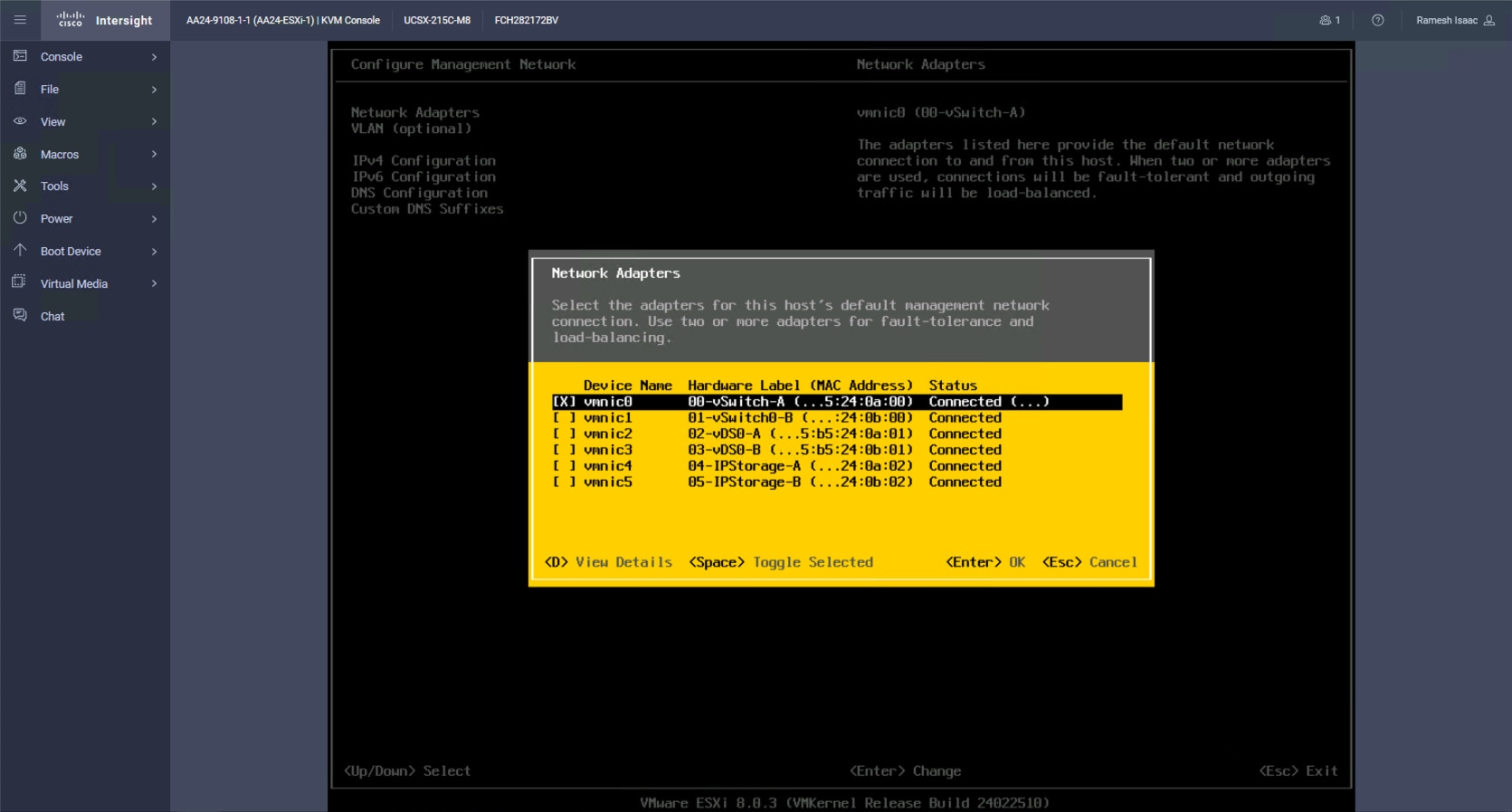

Six vNICs are configured to support management, application traffic, and IP storage traffic for NVMe/TCP. The vNICs are organized in pairs: the management pair connects to a standard vSwitch that supports the management VMkernel; the application pair connects to a vSphere Distributed Switch (VDS) that carries vMotion and application traffic; and the IP storage pair uses one vNIC per fabric (A and B) to carry the NVMe/TCP traffic for the vSphere datastores. These vNICs are manually placed as listed in Table 10.

| vNIC/vHBA Name | Slot | Switch ID | PCI Order |
| --- | --- | --- | --- |
| 00-vSwitch0-A | MLOM | A | 0 |
| 01-vSwitch0-B | MLOM | B | 1 |
| 02-VDS0-A | MLOM | A | 2 |
| 03-VDS0-B | MLOM | B | 3 |
| 04-IPStorage-A | MLOM | A | 4 |
| 05-IPStorage-B | MLOM | B | 5 |
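The manual placement in Table 10 follows a visible convention: each vNIC name starts with its PCI order, ends with its fabric, and every role has one vNIC on fabric A and one on fabric B. The following Python sketch encodes the table rows and checks those properties, which can be useful when adapting the layout. The `VnicPlacement` type and the naming-convention check are assumptions inferred from the table, not Intersight requirements.

```python
from typing import List, NamedTuple


class VnicPlacement(NamedTuple):
    name: str
    slot: str
    switch_id: str
    pci_order: int


# Rows of Table 10.
TABLE_10 = [
    VnicPlacement("00-vSwitch0-A", "MLOM", "A", 0),
    VnicPlacement("01-vSwitch0-B", "MLOM", "B", 1),
    VnicPlacement("02-VDS0-A", "MLOM", "A", 2),
    VnicPlacement("03-VDS0-B", "MLOM", "B", 3),
    VnicPlacement("04-IPStorage-A", "MLOM", "A", 4),
    VnicPlacement("05-IPStorage-B", "MLOM", "B", 5),
]


def placement_problems(vnics: List[VnicPlacement]) -> List[str]:
    problems = []
    # PCI order values should be unique and contiguous from 0.
    if sorted(v.pci_order for v in vnics) != list(range(len(vnics))):
        problems.append("PCI order values are not a contiguous 0..n-1 sequence")
    fabrics_by_role = {}
    for v in vnics:
        # Naming convention observed in Table 10: "<PCI order>-<role>-<fabric>".
        order_prefix, role_and_side = v.name.split("-", 1)
        role, _, side = role_and_side.rpartition("-")
        if int(order_prefix) != v.pci_order:
            problems.append(f"{v.name}: name prefix does not match PCI order {v.pci_order}")
        if side != v.switch_id:
            problems.append(f"{v.name}: name suffix does not match Switch ID {v.switch_id}")
        fabrics_by_role.setdefault(role, set()).add(v.switch_id)
    # Every role should have one vNIC on each fabric for redundancy.
    for role, fabrics in fabrics_by_role.items():
        if fabrics != {"A", "B"}:
            problems.append(f"{role}: vNICs are not split across fabrics A and B")
    return problems


print(placement_problems(TABLE_10))  # prints []
```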

Procedure 1. Create Server Profile Template

Step 1. Log into Cisco Intersight.

Step 2. Go to Infrastructure Service > Configure > Templates and in the main window click Create UCS Server Profile Template.

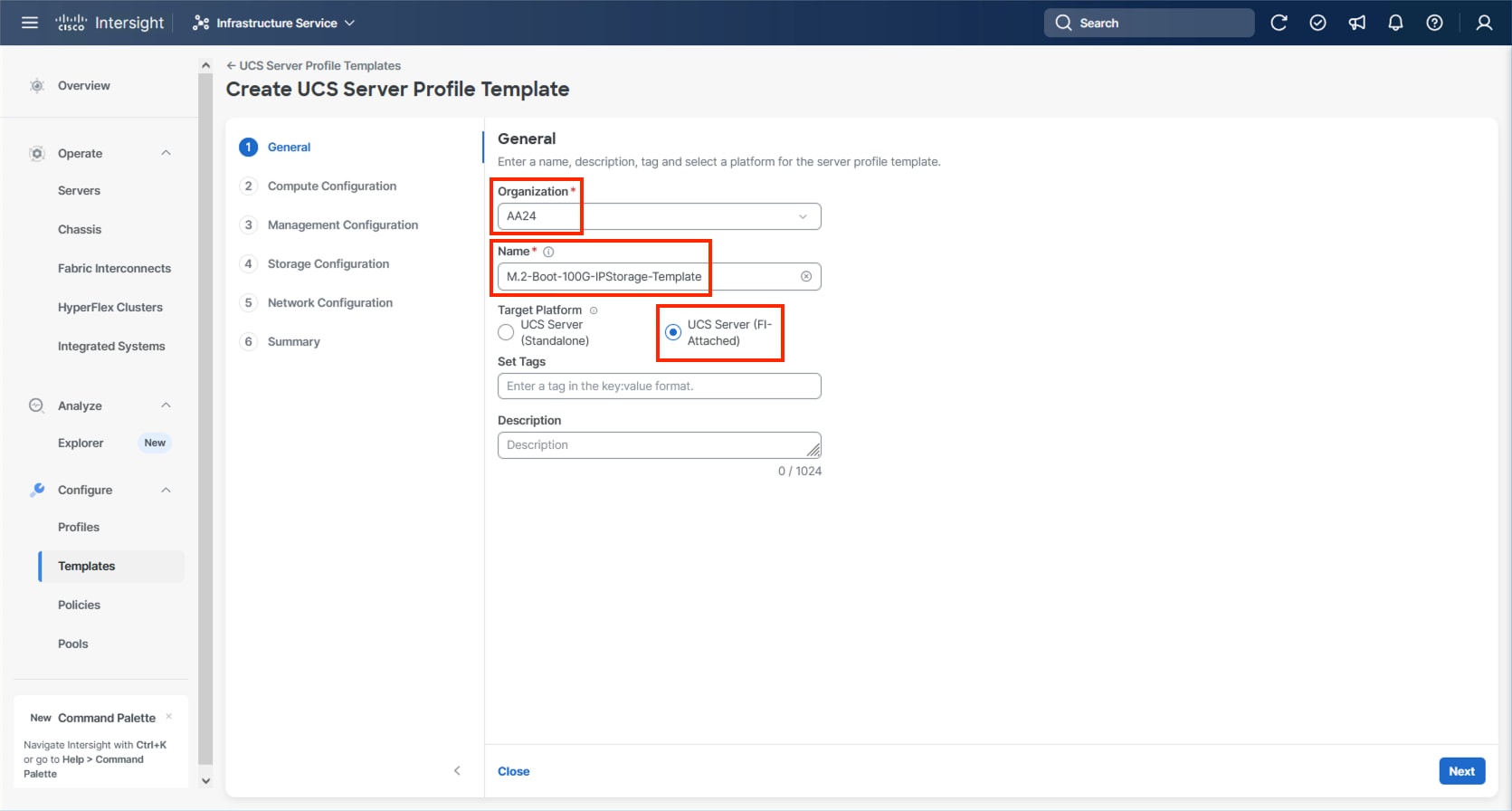

Procedure 2. General Configuration

Step 1. Select the organization from the drop-down list (for example, AA24).

Step 2. Provide a name for the server profile template (for example, M.2-Boot-100G-IPStorage-Template).

Step 3. Select UCS Server (FI-Attached).

Step 4. Provide a description (optional).

Step 5. Click Next.

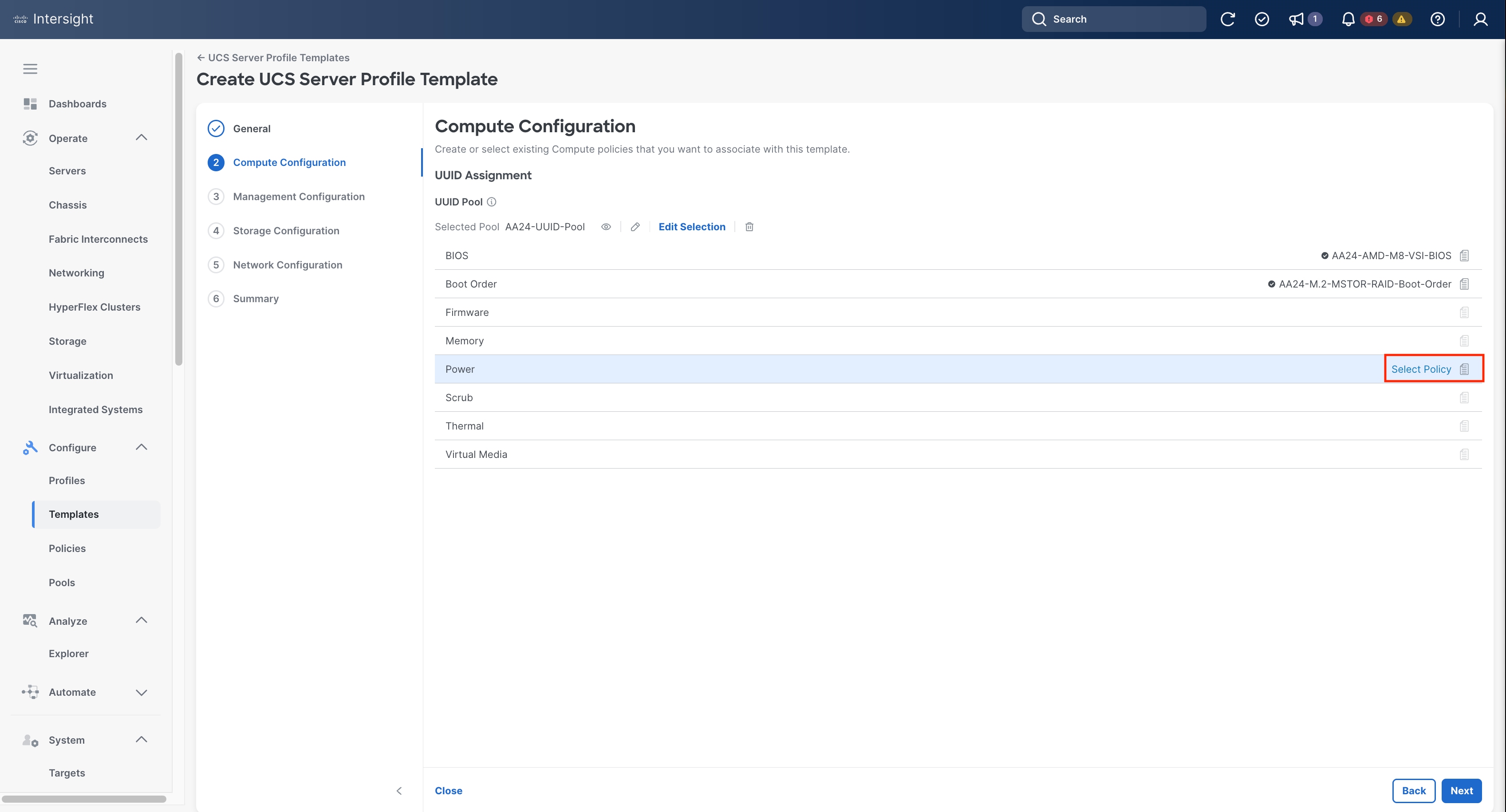

Compute Configuration

The following subcomponents of pools and policies will be addressed in the Compute Configuration:

● A UUID Pool will be created to be used for the identities of Server Profiles derived from the Server Profile Template

● A BIOS Policy to set the available settings for the underlying hardware of the UCS Compute Nodes

● A Boot Order Policy to set the boot order of the Compute Nodes

● A Virtual Media Policy to enable virtual media accessibility to the KVM

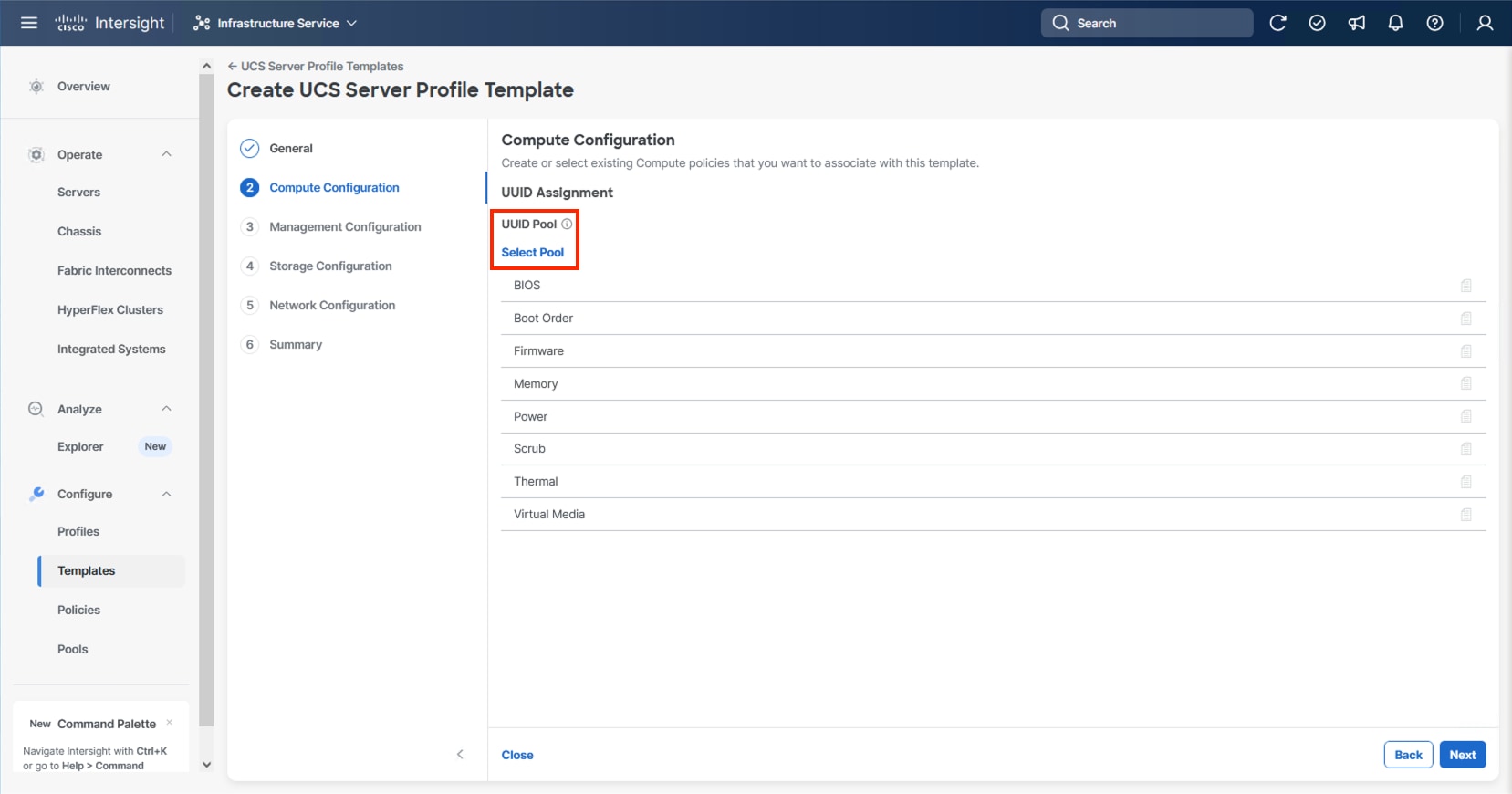

Procedure 1. Configure UUID Pool

Step 1. Click Select Pool under UUID Pool and then in the pane on the right, click Create New.

Step 2. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the UUID Pool (for example, AA24-UUID-Pool).

Step 3. Provide a Description (optional) and click Next.

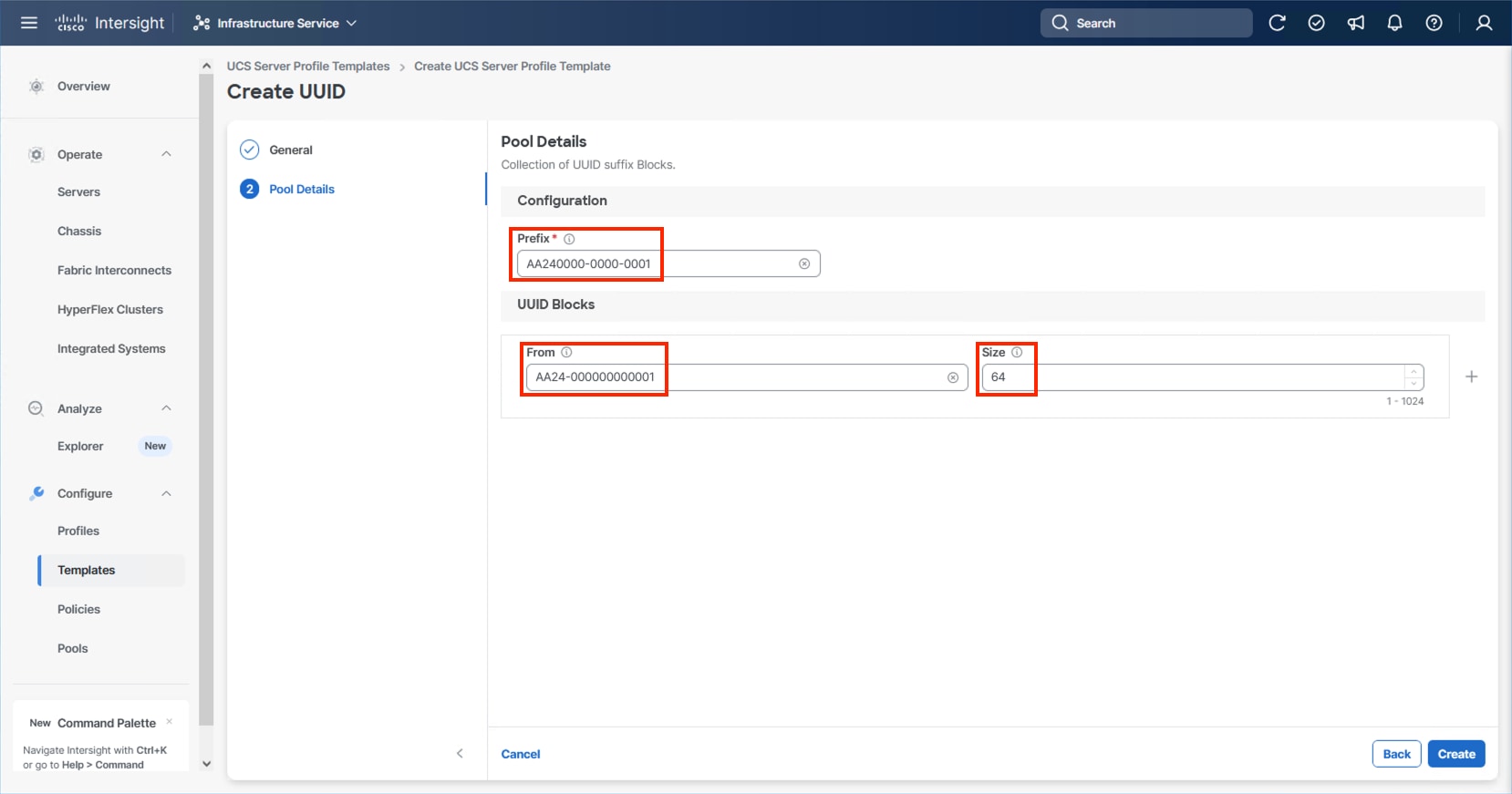

Step 4. Provide a hexadecimal UUID Prefix (for example, AA240000-0000-0001).

Step 5. Add a UUID block specifying a From starting value (for example, AA24-000000000001) and a Size.

Step 6. Click Create.
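To preview the identities a UUID block will hand out, the prefix and block values can be expanded offline. This Python sketch assumes each full UUID is the 16-hex-digit prefix (format `XXXXXXXX-XXXX-XXXX`, as in Step 4) followed by a sequentially incremented 16-hex-digit suffix (format `XXXX-XXXXXXXXXXXX`, as in Step 5). The function name is hypothetical, and this is an illustration of the numbering, not how Intersight allocates addresses internally.

```python
import string
import uuid
from typing import List

_HEX = set(string.hexdigits)


def _is_hex_groups(text: str, lengths: List[int]) -> bool:
    """True if text is hyphen-separated hex groups with exactly the given lengths."""
    groups = text.split("-")
    return [len(g) for g in groups] == lengths and all(set(g) <= _HEX for g in groups)


def expand_uuid_block(prefix: str, start: str, size: int) -> List[str]:
    """Expand a UUID block as prefix (XXXXXXXX-XXXX-XXXX) + suffix (XXXX-XXXXXXXXXXXX)."""
    if not _is_hex_groups(prefix, [8, 4, 4]):
        raise ValueError(f"bad prefix: {prefix!r}")
    if not _is_hex_groups(start, [4, 12]):
        raise ValueError(f"bad From value: {start!r}")
    first = int(start.replace("-", ""), 16)
    # The last suffix (first + size - 1) must still fit in 64 bits.
    if first + size > 1 << 64:
        raise ValueError("block would overflow the 64-bit suffix")
    uuids = []
    for offset in range(size):
        suffix = f"{first + offset:016X}"
        full = f"{prefix.upper()}-{suffix[:4]}-{suffix[4:]}"
        uuid.UUID(full)  # raises ValueError if the result is not a well-formed UUID
        uuids.append(full)
    return uuids


for u in expand_uuid_block("AA240000-0000-0001", "AA24-000000000001", 3):
    print(u)
```

With the example values from Steps 4 and 5, this prints three UUIDs ending in `AA24-000000000001` through `AA24-000000000003`.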

Procedure 2. Configure BIOS Policy

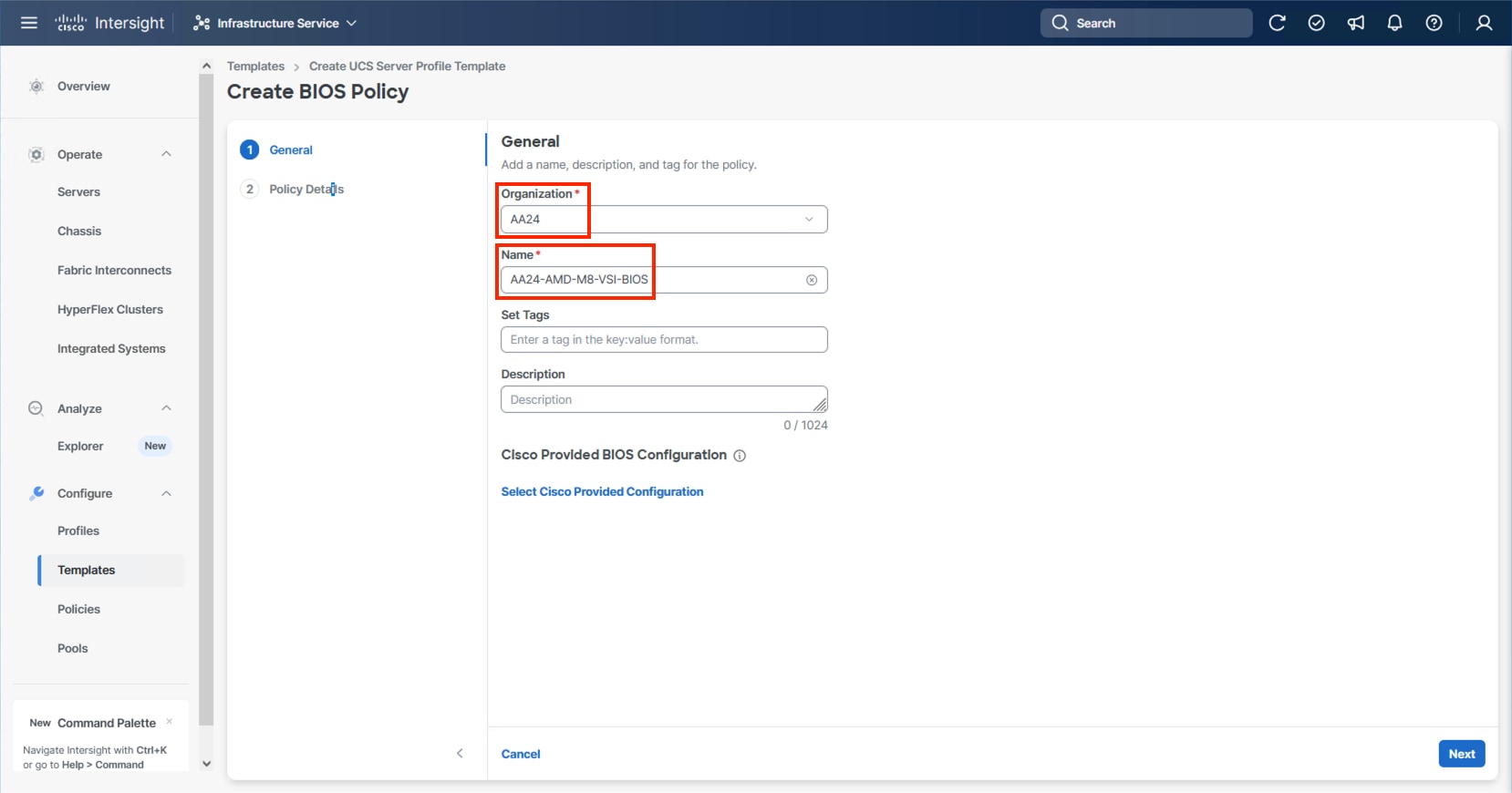

Step 1. Click Select Policy next to BIOS and in the pane on the right, click Create New.

Step 2. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-AMD-M8-VSI-BIOS).

Note: At the time of this publication, there is not a Cisco Provided BIOS Configuration option for the AMD M8 servers within Intersight, so a manual configuration will be used.

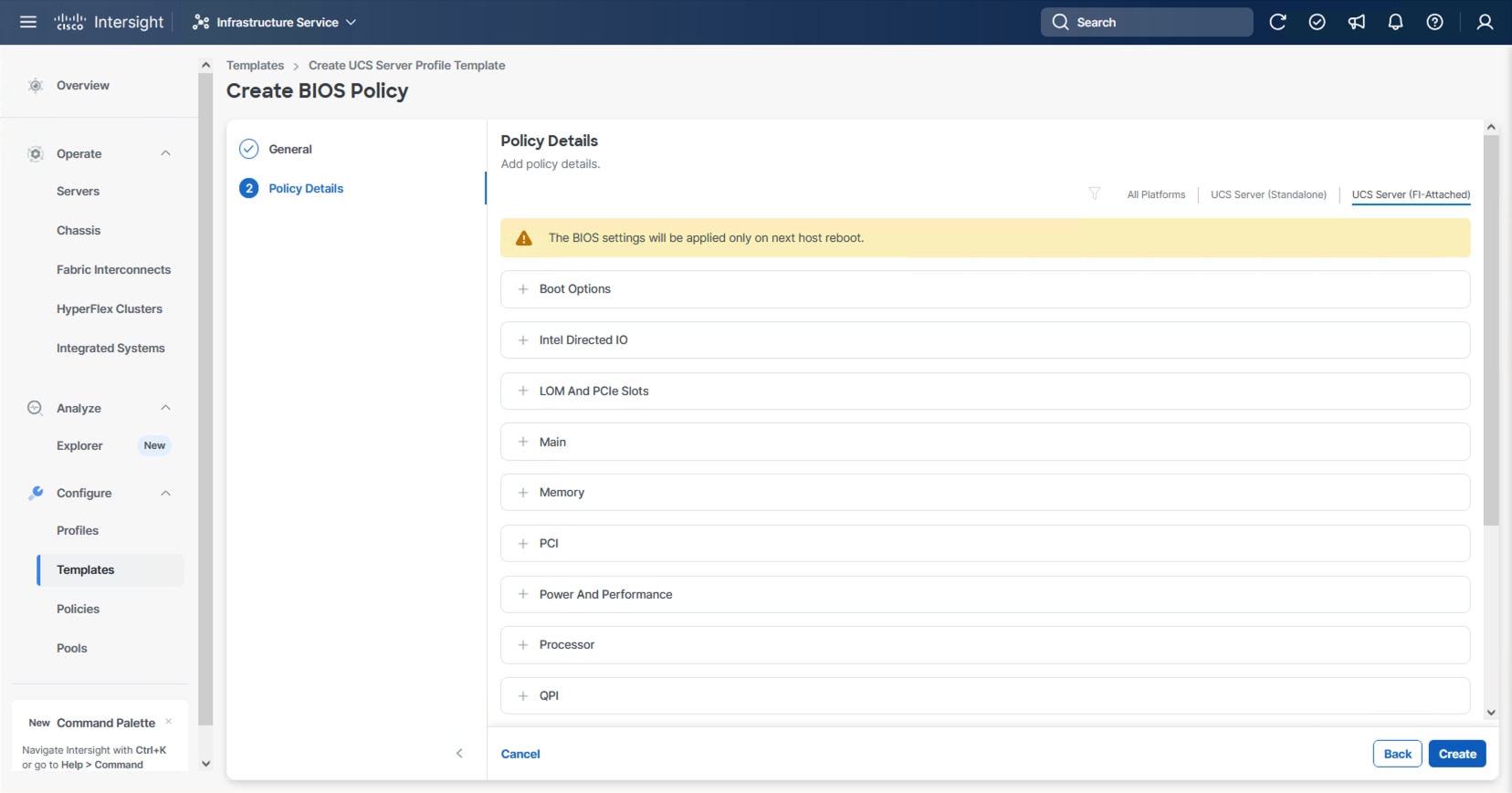

Step 3. Click Next.

Step 4. From the Policy Details screen, select the appropriate values for the BIOS settings. In this deployment, the BIOS values were selected based on the recommendations in the Performance Tuning for Cisco UCS M8 Platforms with AMD EPYC 4th Gen and 5th Gen Processors guide, which was published for the Cisco UCS C245 M8 servers. Because the platform defaults largely align with the recommendations for virtualized workloads, adjust only the parameter in the next step and leave all other parameters set to platform-default.

Step 5. Go to Power and Performance and set CPPC to Enabled.

Step 6. Click Create.

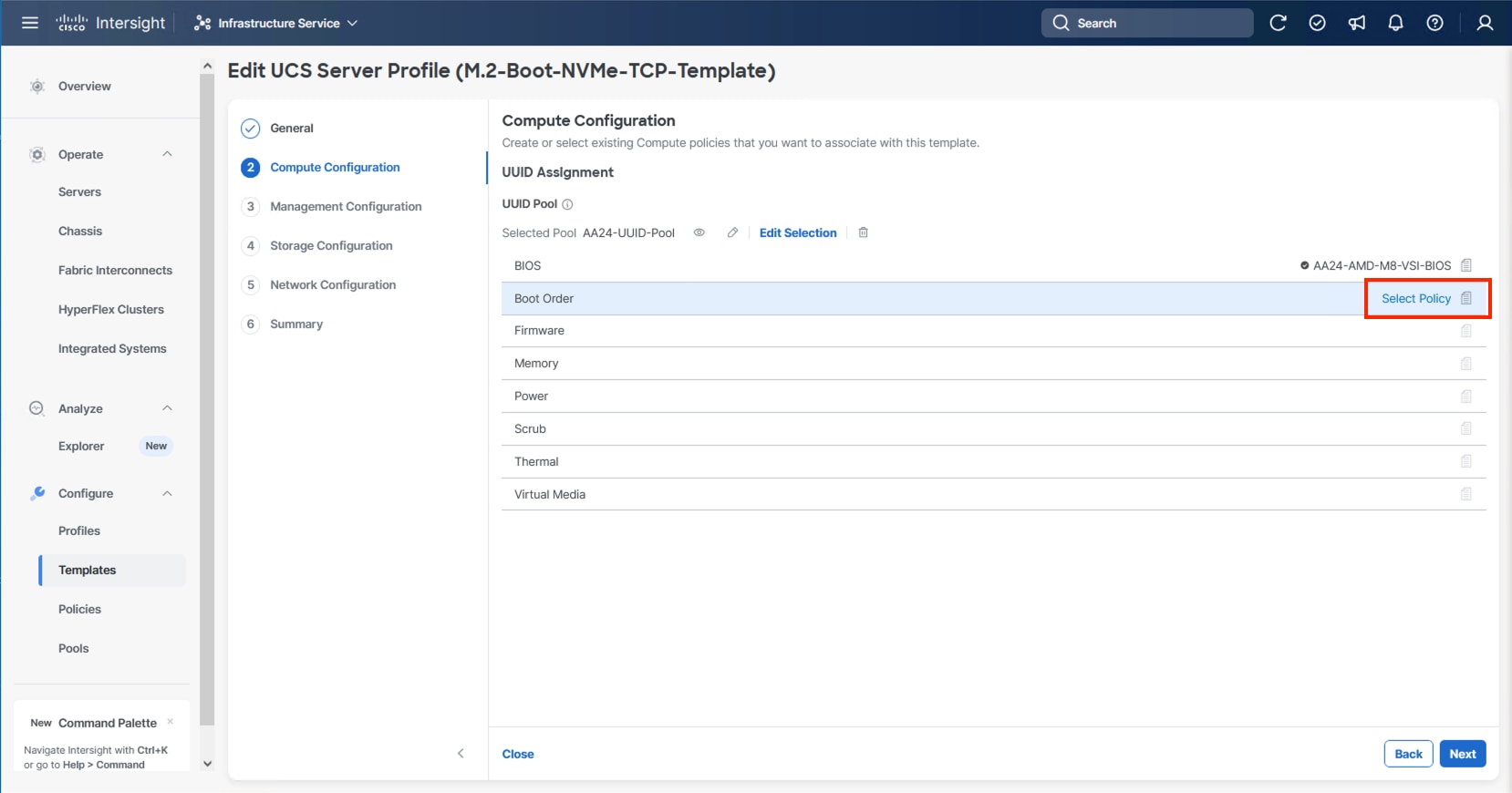

Procedure 3. Configure Boot Order Policy

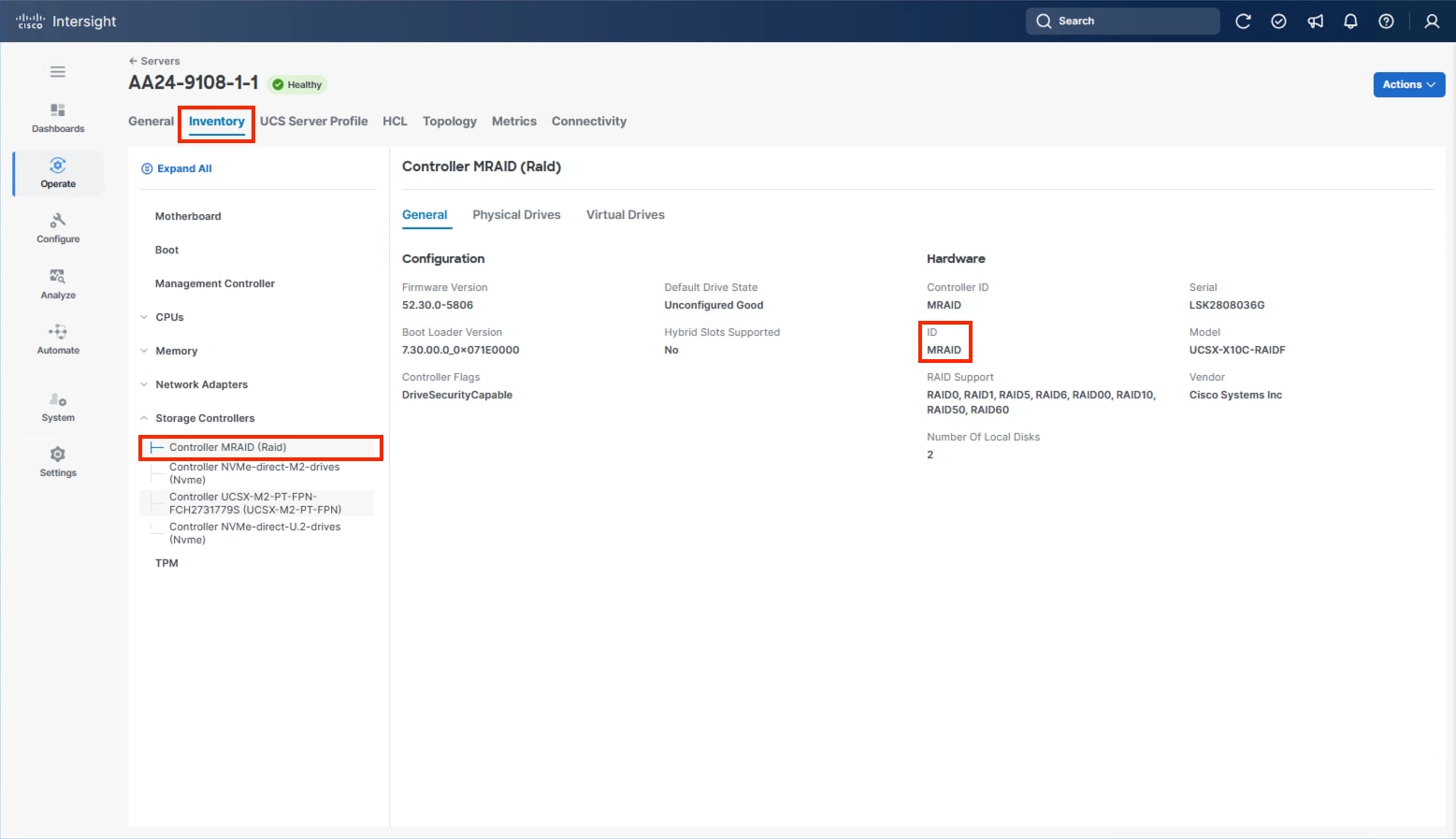

The local M.2 boot option is recommended in this design. This configuration requires the ID of the server storage controller.

Step 1. Without closing the configuration dialog, right-click Servers under Operate in the left navigation pane of Intersight and open the link in a new tab or window.

Step 2. From the new tab or window, go to Operate > Servers and click one of your servers.

Step 3. From the server view, go to Inventory > Storage Controllers, select the RAID controller, and note the ID associated with it.

Step 4. Go to the Server Profile Template browser window.

Step 5. Click Select Policy next to Boot Order and in the right pane click Create New.

Step 6. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-M.2-MSTOR-Boot-Order).

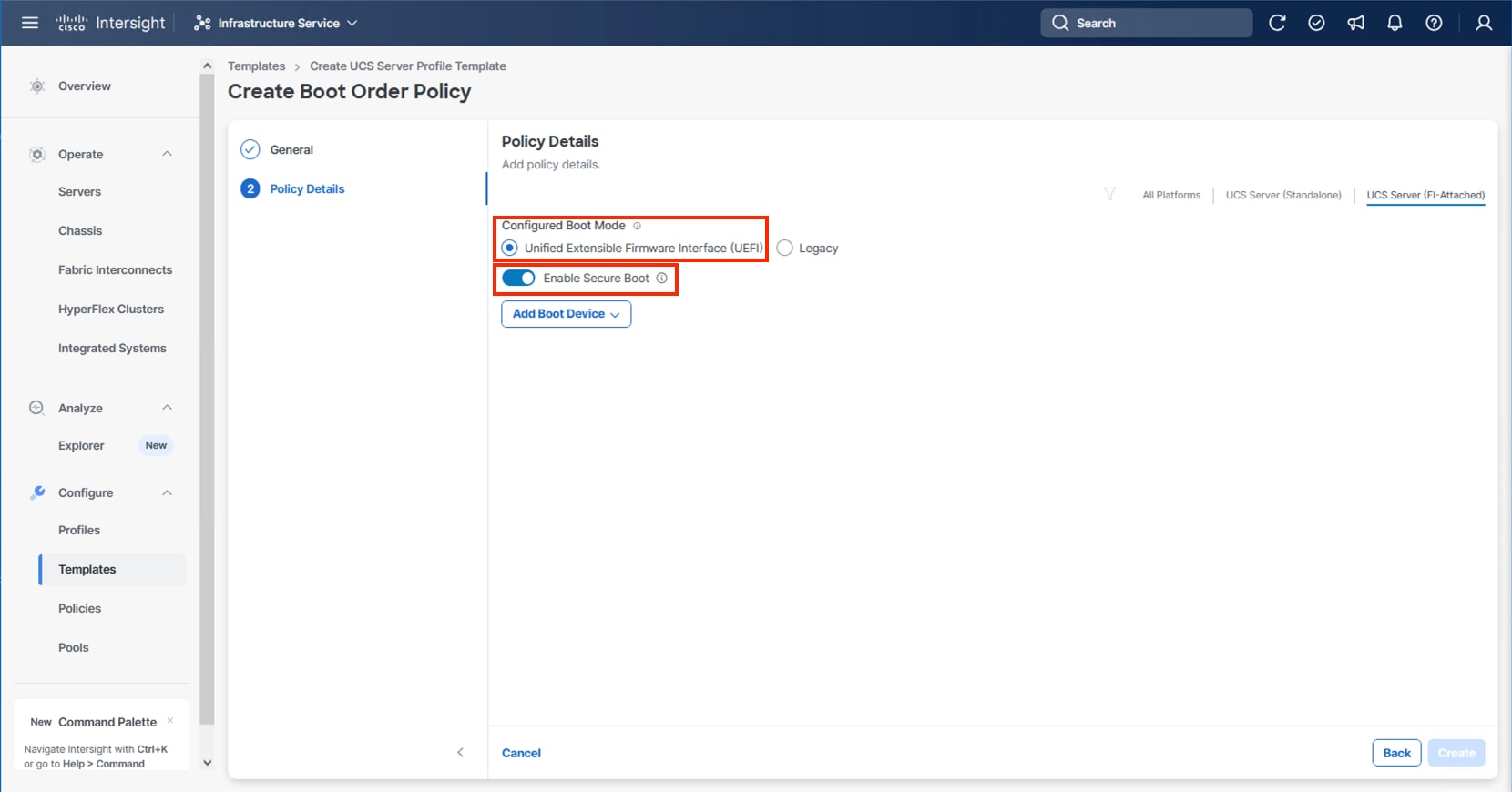

Step 7. Click Next.

Step 8. For Configured Boot Mode, select Unified Extensible Firmware Interface (UEFI).

Step 9. Turn on Enable Secure Boot.

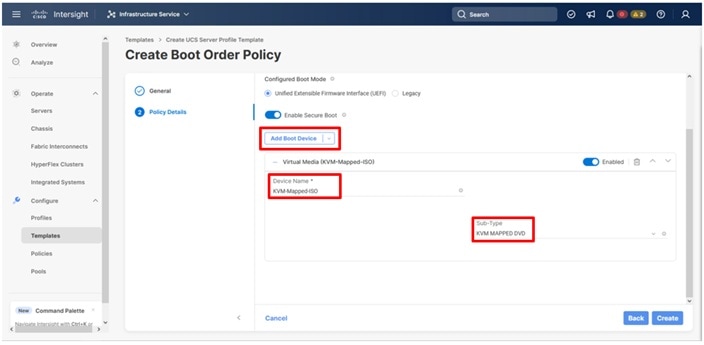

Step 10. From the Add Boot Device drop-down list, select Virtual Media.

Step 11. Provide a Device Name (for example, KVM-Mapped-ISO) and for the Sub-Type, select KVM Mapped DVD.

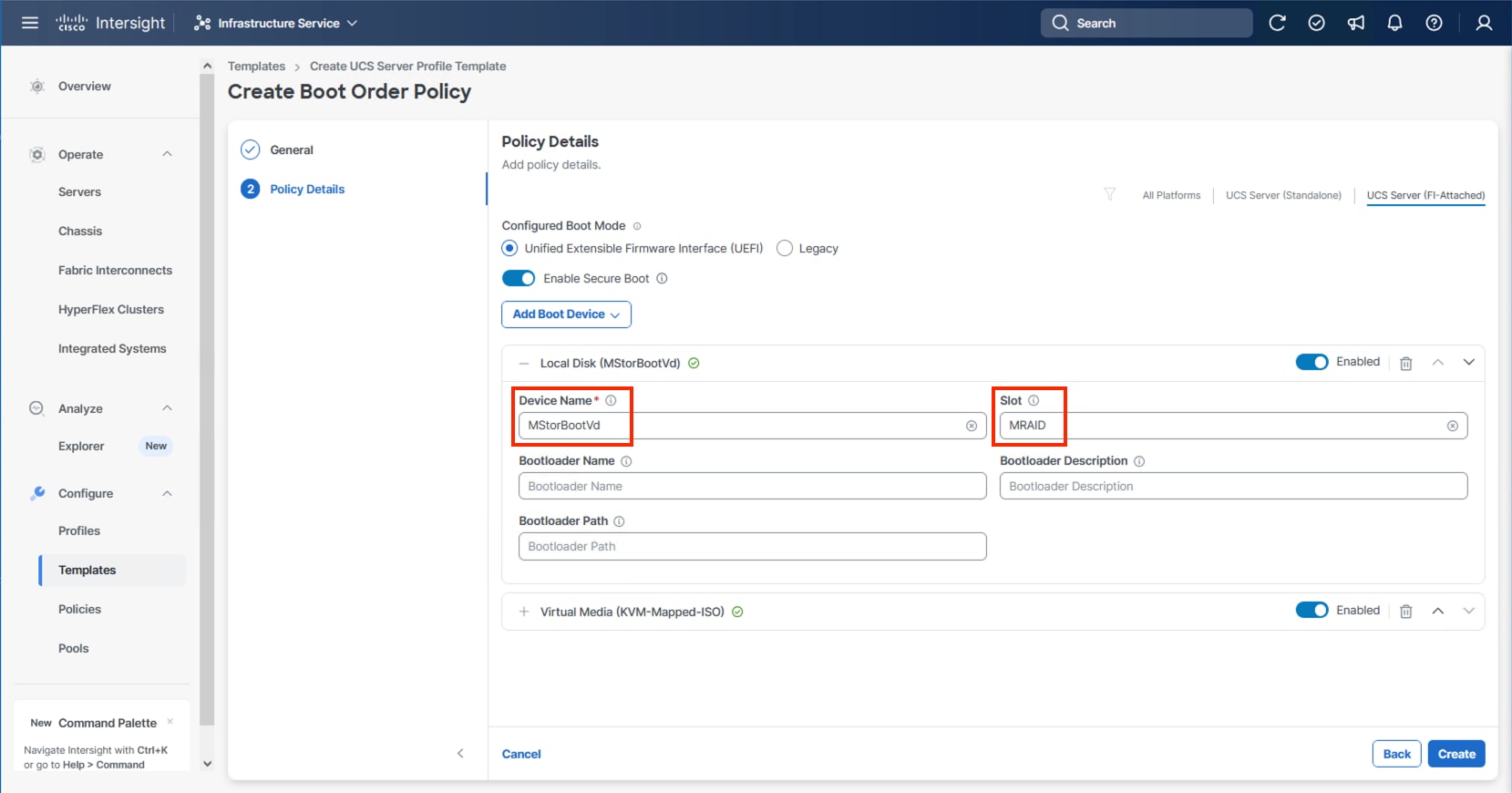

Step 12. From the Add Boot Device drop-down list, select Local Disk.

Step 13. For the Device Name, enter MStorBootVd (this name is used later by the Storage Policy). For the Slot, enter the storage controller ID found in Step 3.

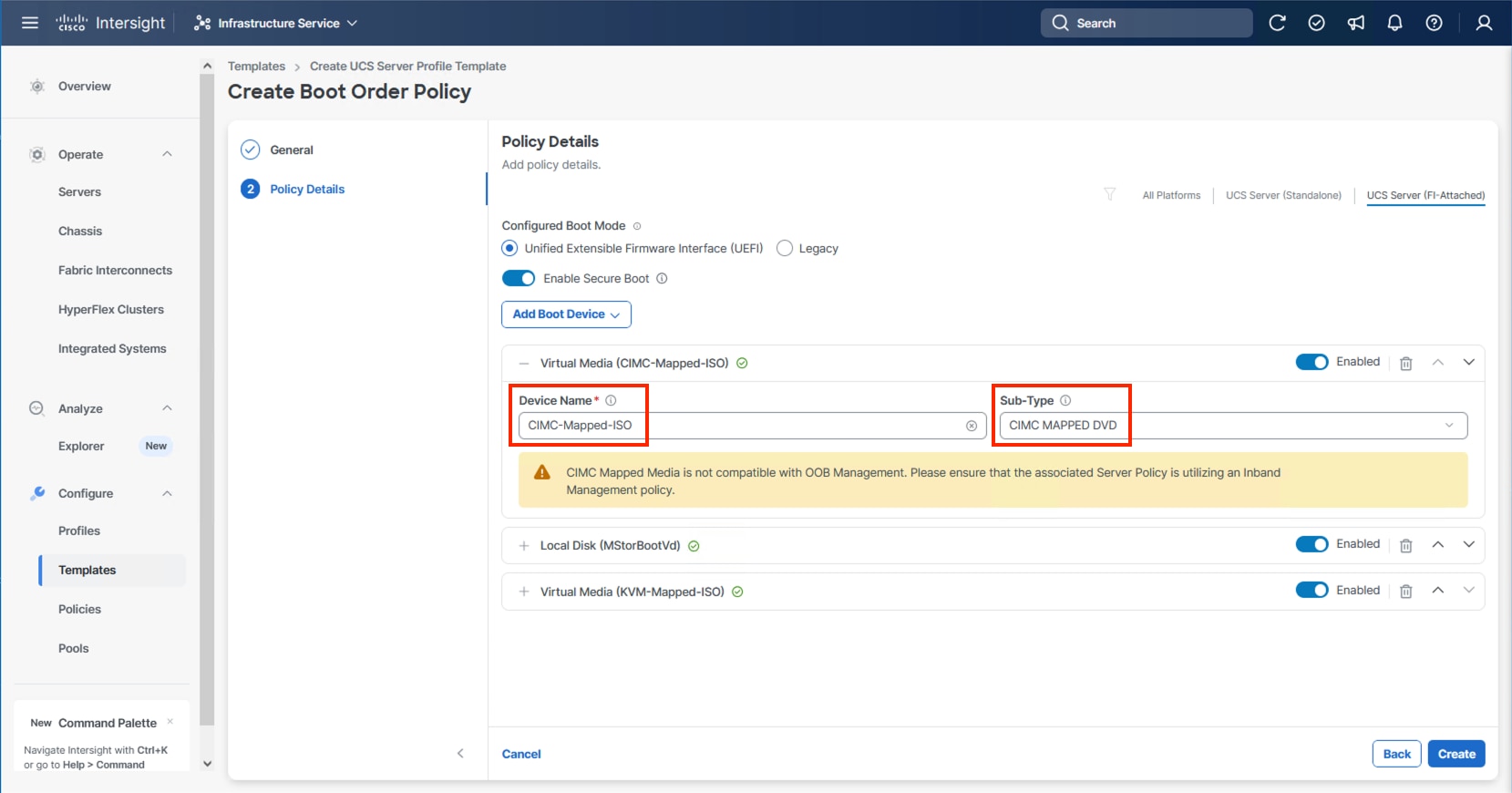

Step 14. (Optional) From the Add Boot Device drop-down list, select Virtual Media again to add a CIMC boot option, which allows booting from an ISO defined within the vMedia policy.

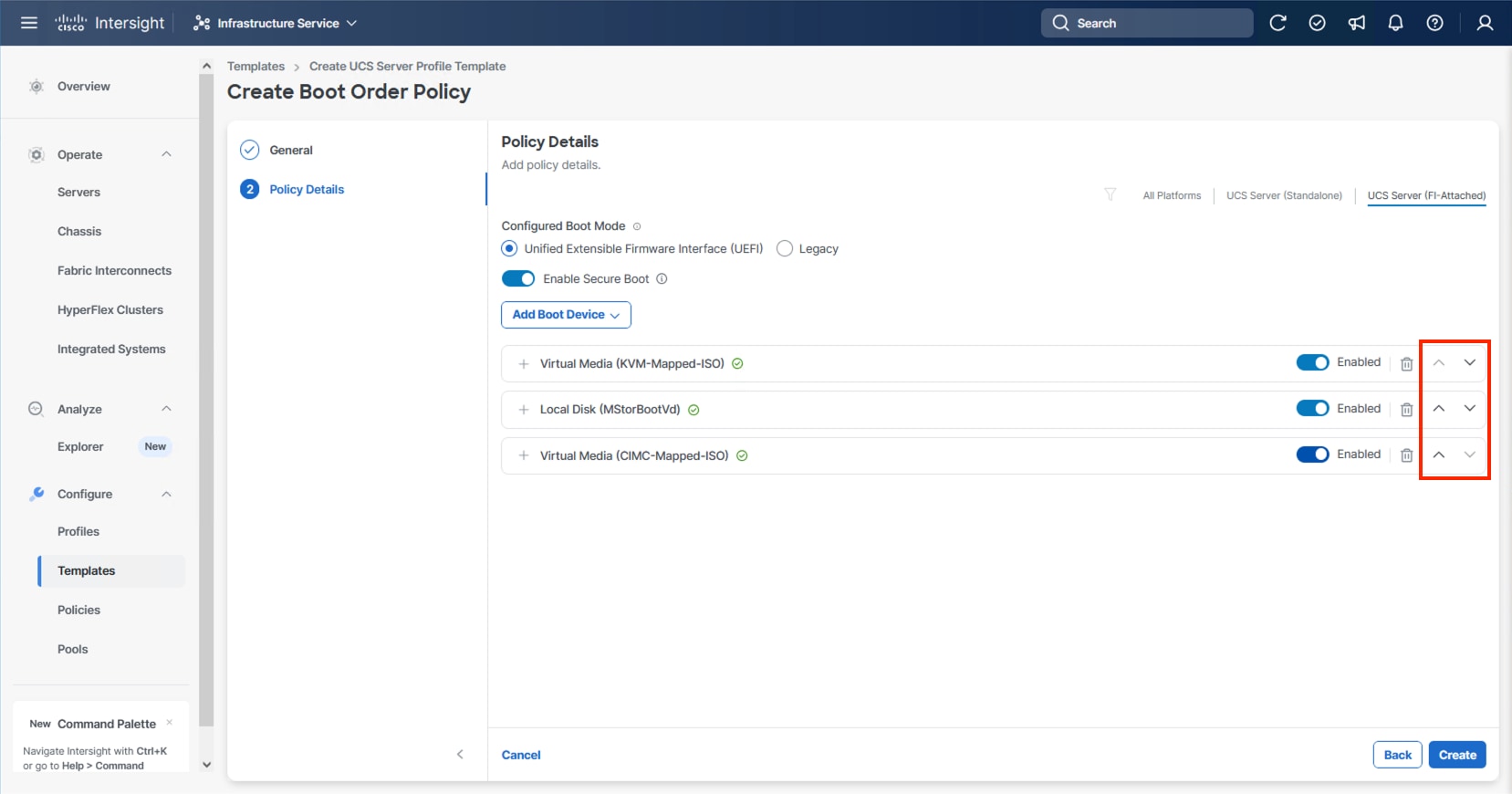

Step 15. Use the up/down arrows of each defined boot device to create the order that will be checked at boot time and click Create.
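The order set in Step 15 matters because the devices are tried in sequence and the first one that can boot wins. The following Python sketch is a simplified model of that behavior, not UEFI firmware logic. It uses the device names from Steps 11 and 13. `CIMC-Mapped-ISO` is a hypothetical name for the optional Step 14 device, and the order shown is an assumption.

```python
from typing import Iterable, Optional, Set


def first_bootable(boot_order: Iterable[str], bootable: Set[str]) -> Optional[str]:
    """Simplified model: try devices in order and return the first one that can boot."""
    for device in boot_order:
        if device in bootable:
            return device
    return None  # nothing in the list could boot


# Device names from Steps 11 and 13; "CIMC-Mapped-ISO" is a hypothetical name for the
# optional Step 14 device, and this particular order is an assumption.
ORDER = ["KVM-Mapped-ISO", "MStorBootVd", "CIMC-Mapped-ISO"]

print(first_bootable(ORDER, {"KVM-Mapped-ISO", "MStorBootVd"}))  # KVM-Mapped-ISO
print(first_bootable(ORDER, {"MStorBootVd"}))                    # MStorBootVd
```

With this order, mapping an installer ISO through the KVM takes precedence during installation, while normal boots fall through to the M.2 virtual drive once no ISO is mapped.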

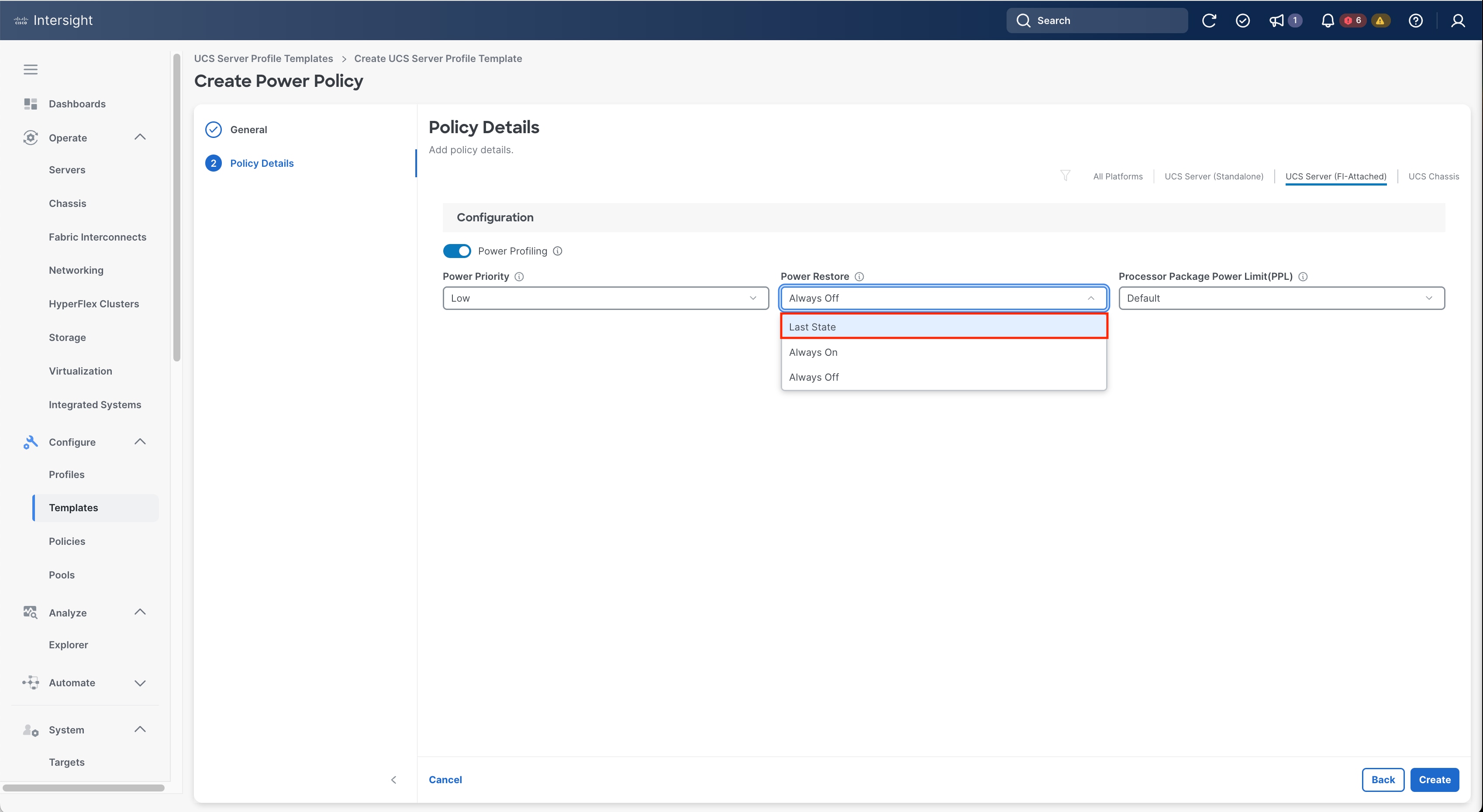

Procedure 4. Configure Power Policy

The Power Policy sets the power state that the nodes return to when power is restored after a power loss.

Step 1. Click Select Policy next to Power and click Create Policy.

Step 2. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-NodePower).

Step 3. From the Power Restore drop-down list, select Last State.

Step 4. Click Create.

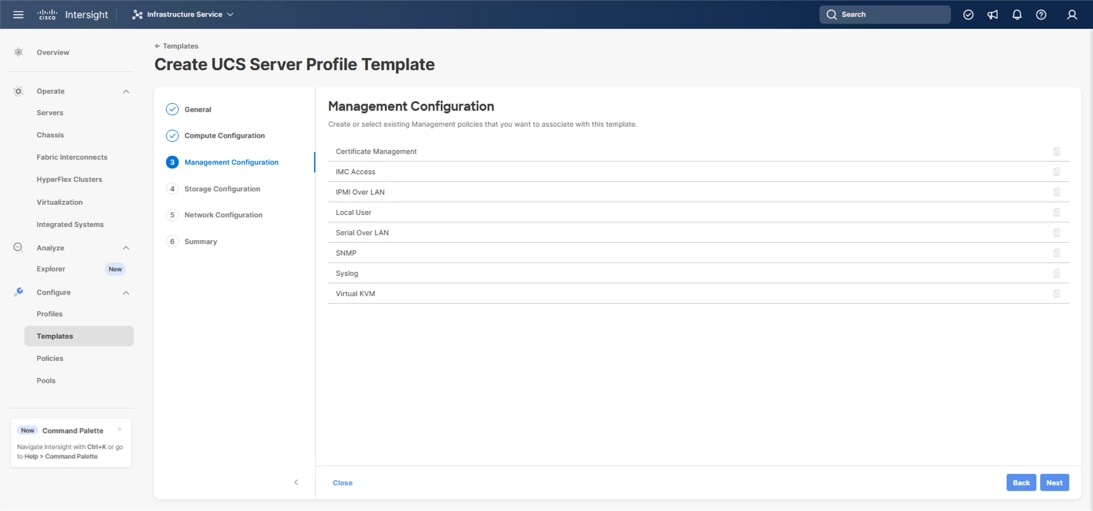

The following policies will be added to the management configuration:

● IMC Access to define the pool of IP addresses for compute node KVM access

● IPMI Over LAN to allow Intersight to manage IPMI messages

● Virtual KVM to allow the Tunneled KVM

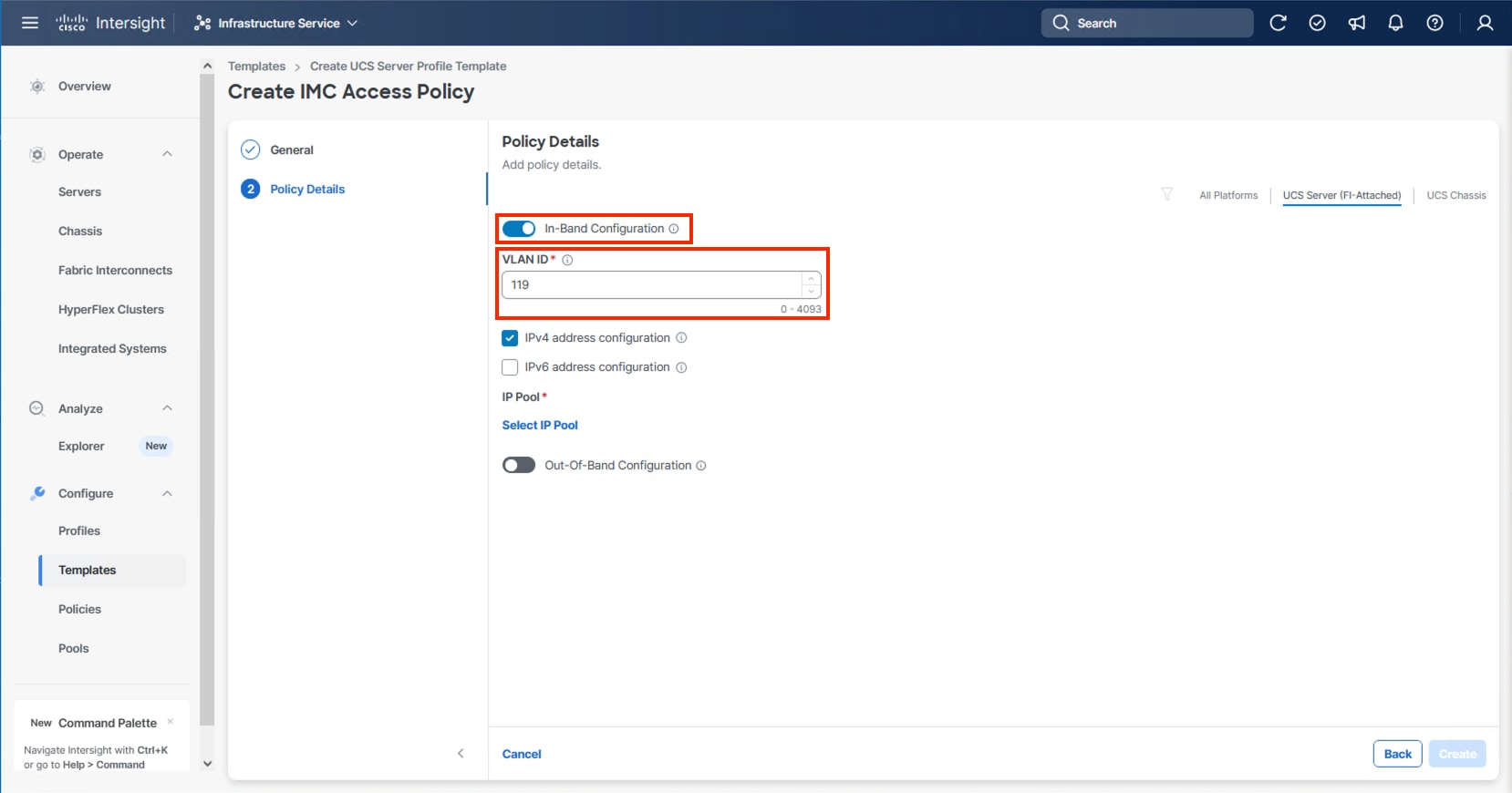

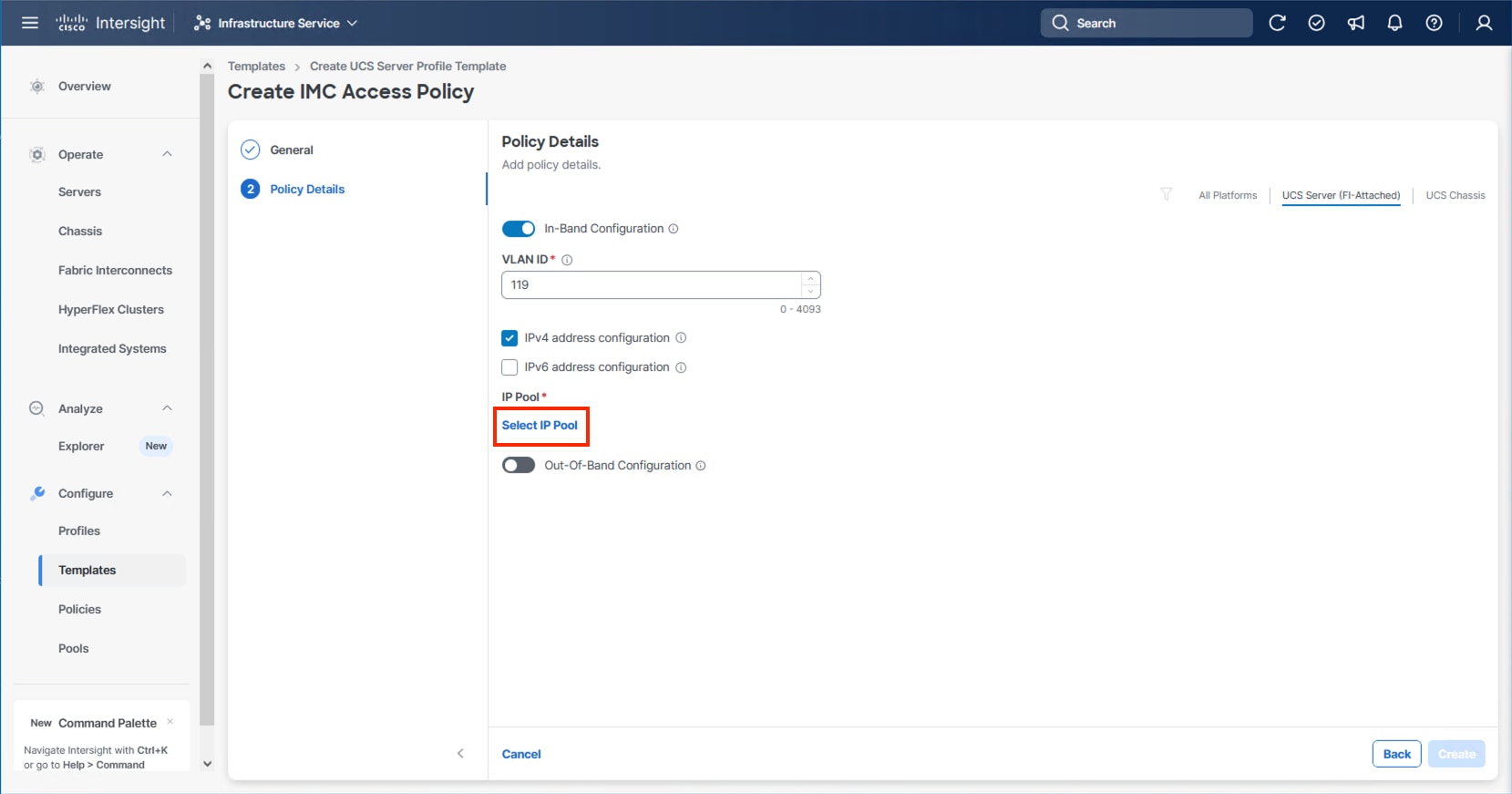

Procedure 1. Configure Cisco IMC Access Policy

Step 1. Click Select Policy next to IMC Access and click Create New.

Step 2. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-IMC-Access-Policy).

Step 3. Click Next.

Step 4. Click UCS Server (FI-Attached) if not selected.

Step 5. Leave In-Band Configuration enabled and specify the VLAN ID of the IB-Mgmt network, or of another network, to use for IMC connectivity.

Note: Deselecting In-Band Configuration displays the option to enable Out-Of-Band Configuration within the IMC Access Policy. An Out-Of-Band Configuration provides IMC and KVM connectivity through the management network of the fabric interconnects. If Out-Of-Band is used, no VLAN is specified, and CIMC vMedia is not available.

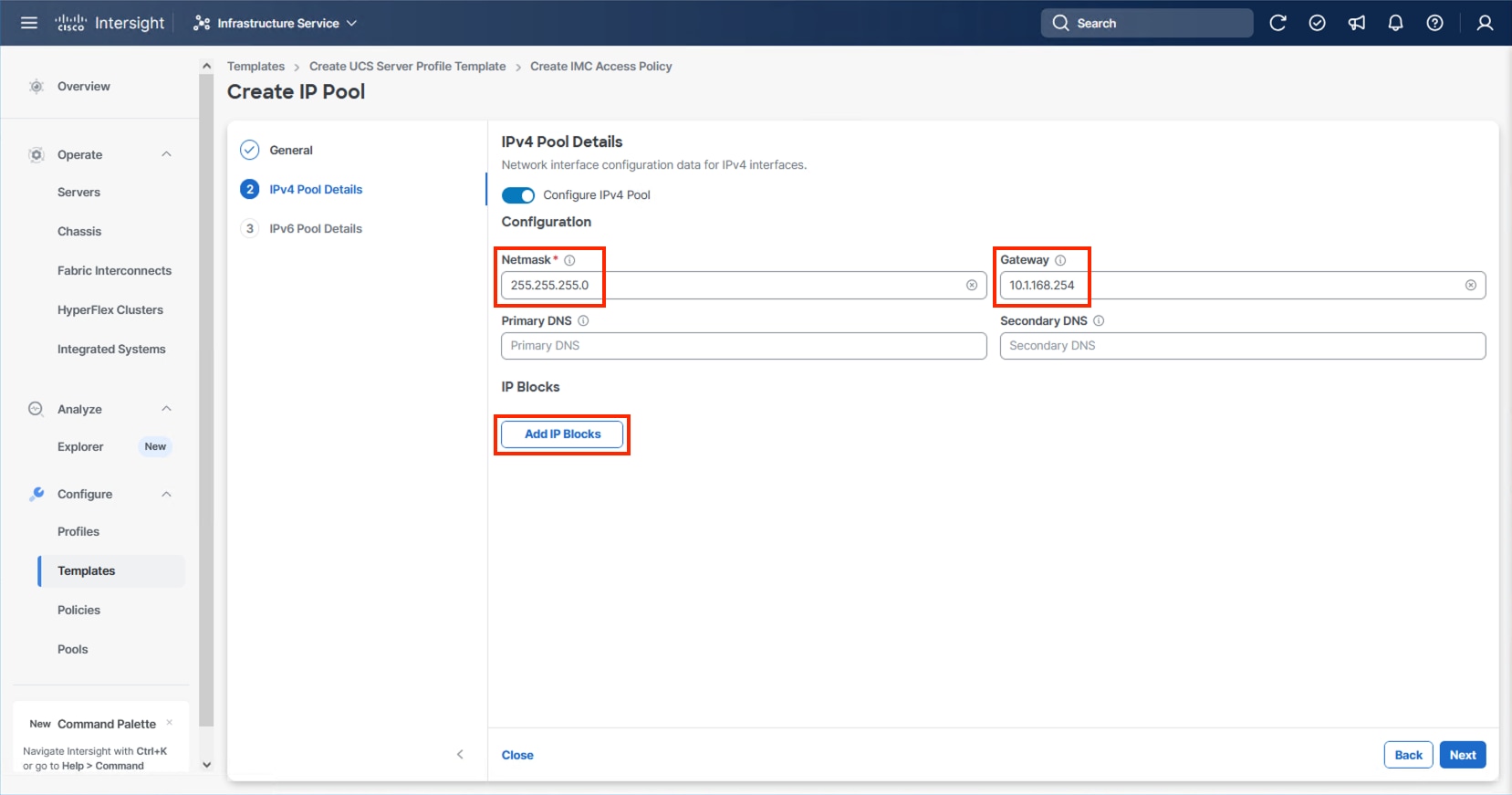

Step 6. Click Select IP Pool and click Create New.

Step 7. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the IP pool (for example, AA24-IB-Mgmt-Pool). Click Next.

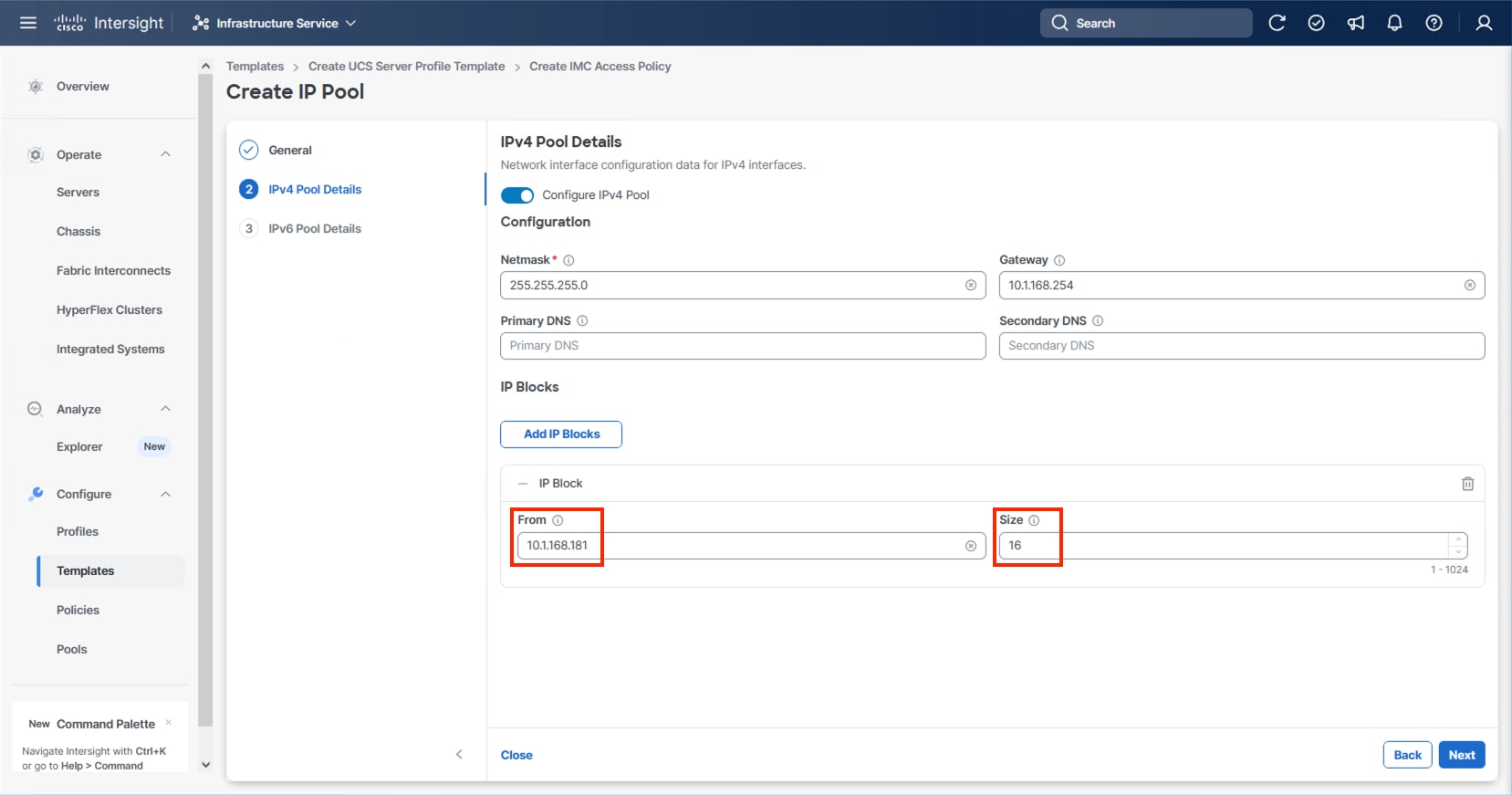

Step 8. Enter the appropriate Netmask and Gateway for the IPv4 Pool and click Add IP Blocks.

Step 9. Specify a starting IP for the block in From and a Size for the block. Click Add IP Blocks if you want to create multiple blocks within the In-Band network.

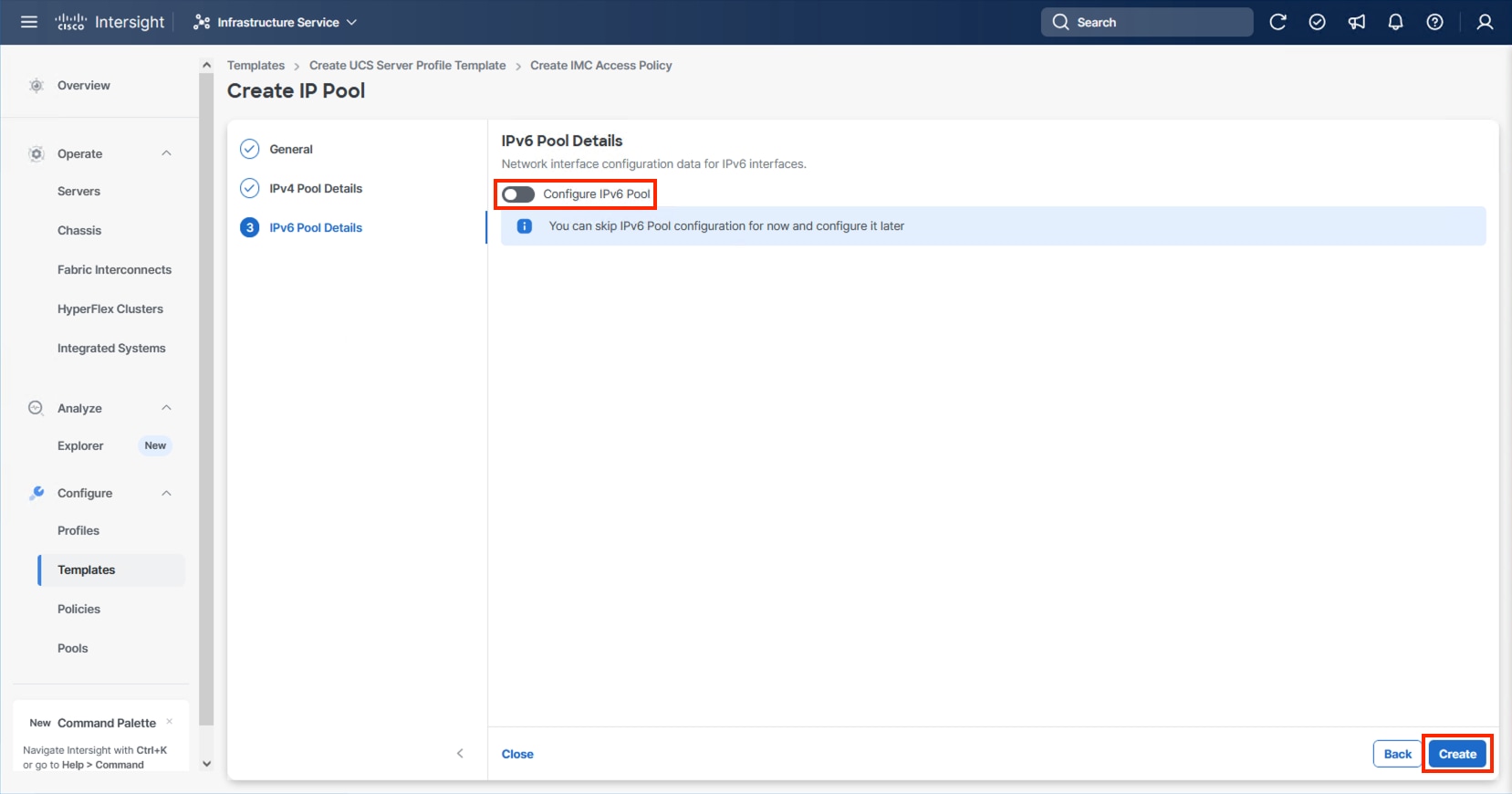

Step 10. Click Next, deselect the Configure IPv6 Pool option unless IPv6 is needed, and click Create.

Step 11. Click Create to finish configuring the IMC access policy.
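Before entering an IP block in Steps 8 and 9, it can help to confirm that the block fits inside the In-Band subnet and does not overlap the gateway, network, or broadcast address. This Python sketch uses the standard `ipaddress` module. The function name and all addresses are hypothetical, and the sketch does not check for conflicts with other devices already on the IB-Mgmt network.

```python
import ipaddress
from typing import List


def expand_ip_block(start: str, size: int, netmask: str, gateway: str) -> List[str]:
    """Expand an IPv4 pool block and reject addresses that cannot be assigned to IMCs."""
    network = ipaddress.IPv4Network(f"{start}/{netmask}", strict=False)
    gw = ipaddress.IPv4Address(gateway)
    if gw not in network:
        raise ValueError(f"gateway {gw} is outside {network}")
    first = ipaddress.IPv4Address(start)
    block = [first + offset for offset in range(size)]
    for addr in block:
        if addr not in network:
            raise ValueError(f"{addr} is outside {network}")
        if addr in (network.network_address, network.broadcast_address, gw):
            raise ValueError(f"{addr} is the network, broadcast, or gateway address")
    return [str(addr) for addr in block]


# Hypothetical IB-Mgmt values: a 16-address block starting at .101 in a /24.
pool = expand_ip_block("10.1.168.101", 16, "255.255.255.0", "10.1.168.254")
print(pool[0], pool[-1])  # 10.1.168.101 10.1.168.116
```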

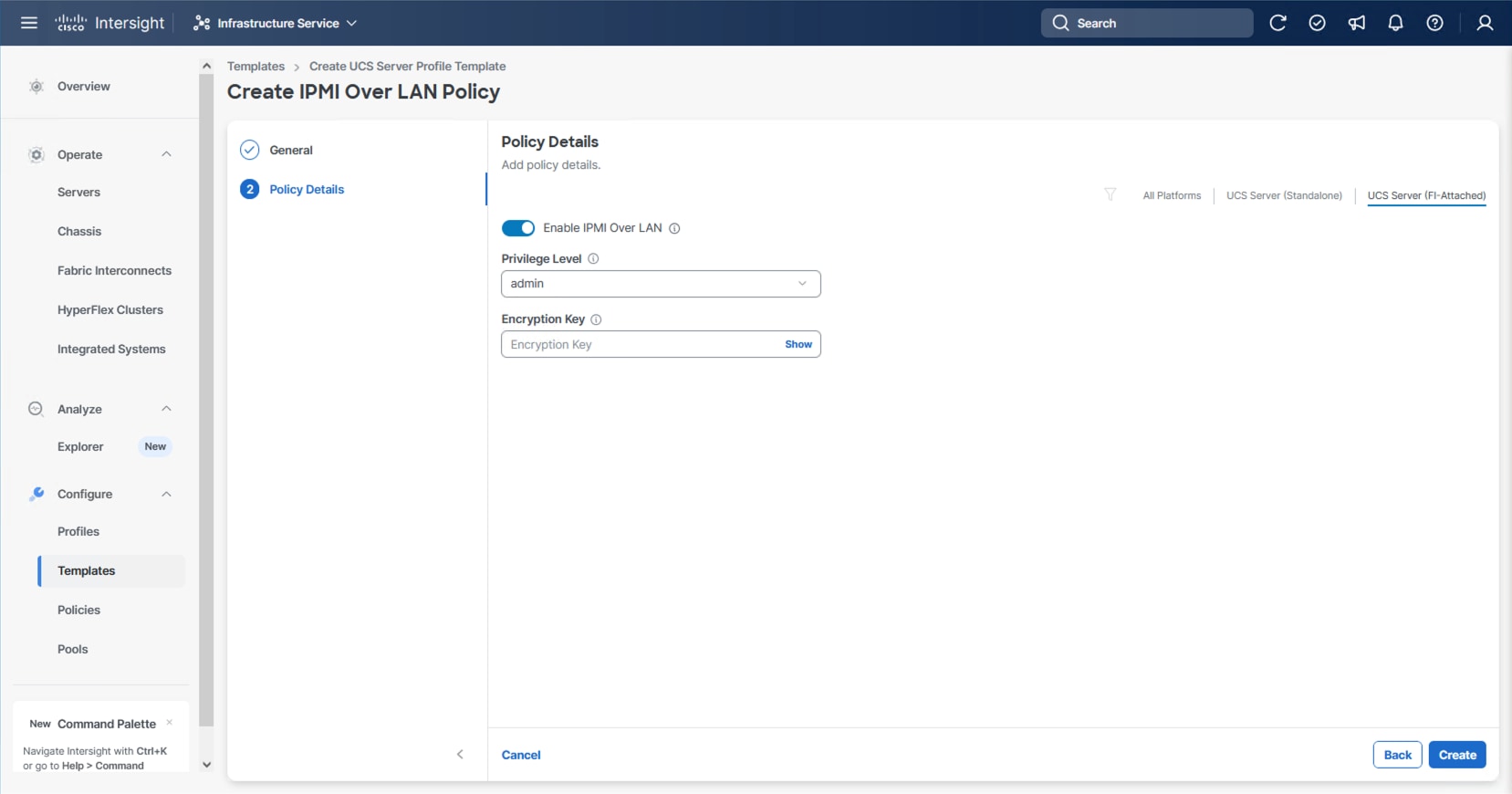

Procedure 2. Configure IPMI Over LAN Policy

Step 1. Click Select Policy next to IPMI Over LAN and click Create New.

Step 2. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-Enable-IPMIoLAN-Policy).

Step 3. Click Next.

Step 4. Leave the default settings in place for this policy.

Step 5. Click Create.

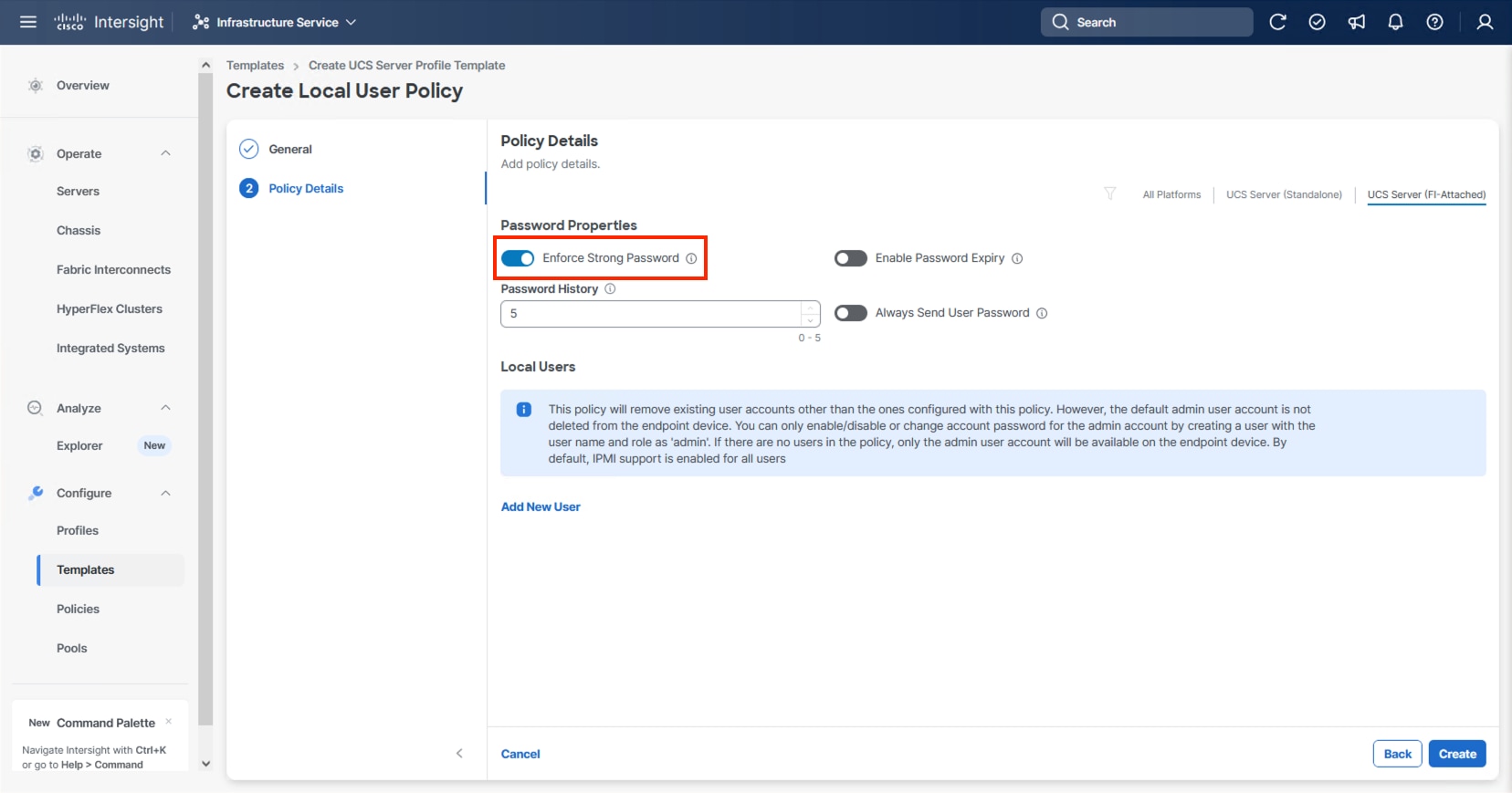

Procedure 3. Configure Local User Policy

Step 1. Click Select Policy next to Local User and click Create New.

Step 2. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-LocalUser).

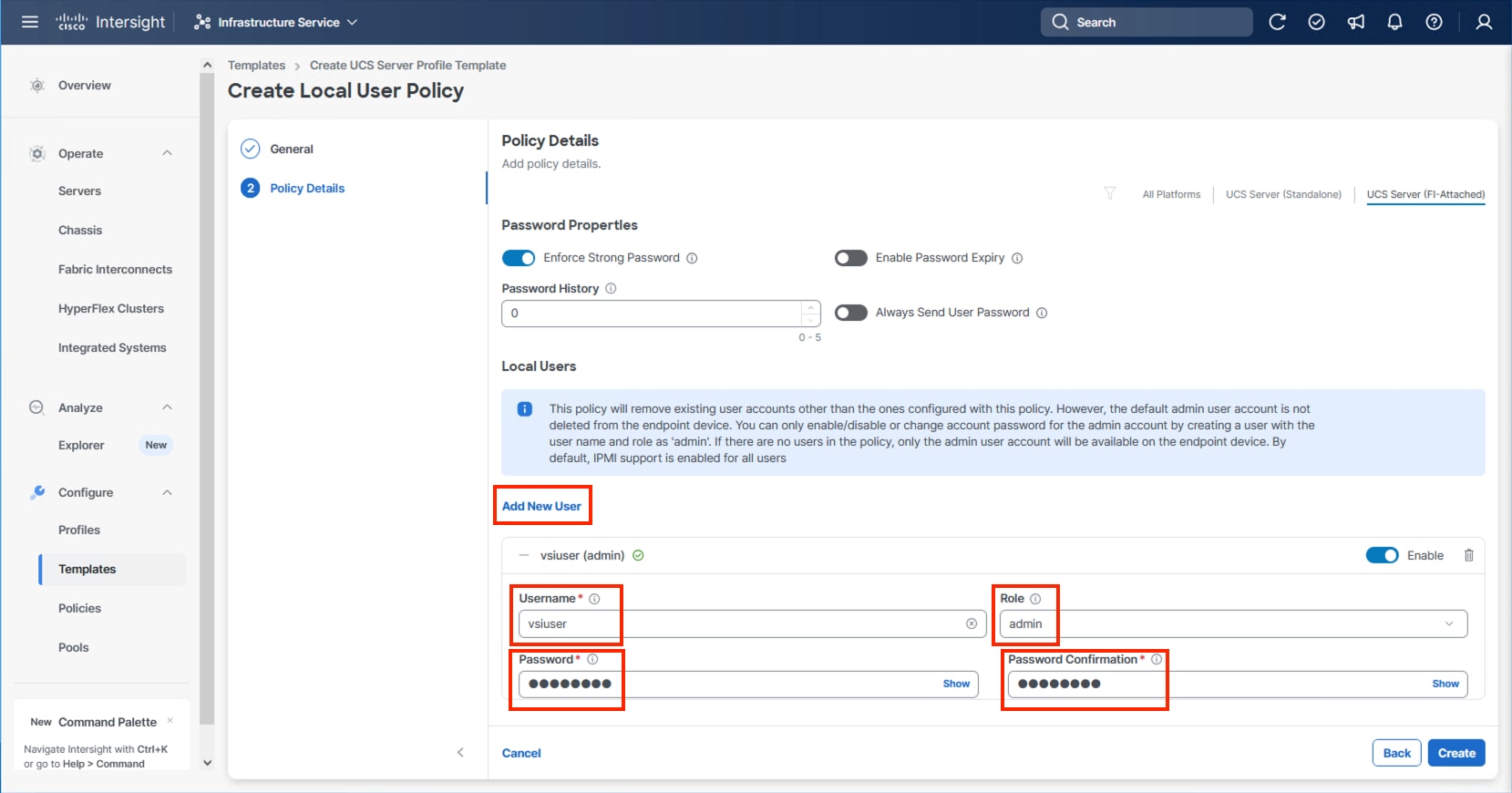

Step 3. Verify that Enforce Strong Password is selected.

Step 4. Click Add New User and then click + next to the New User.

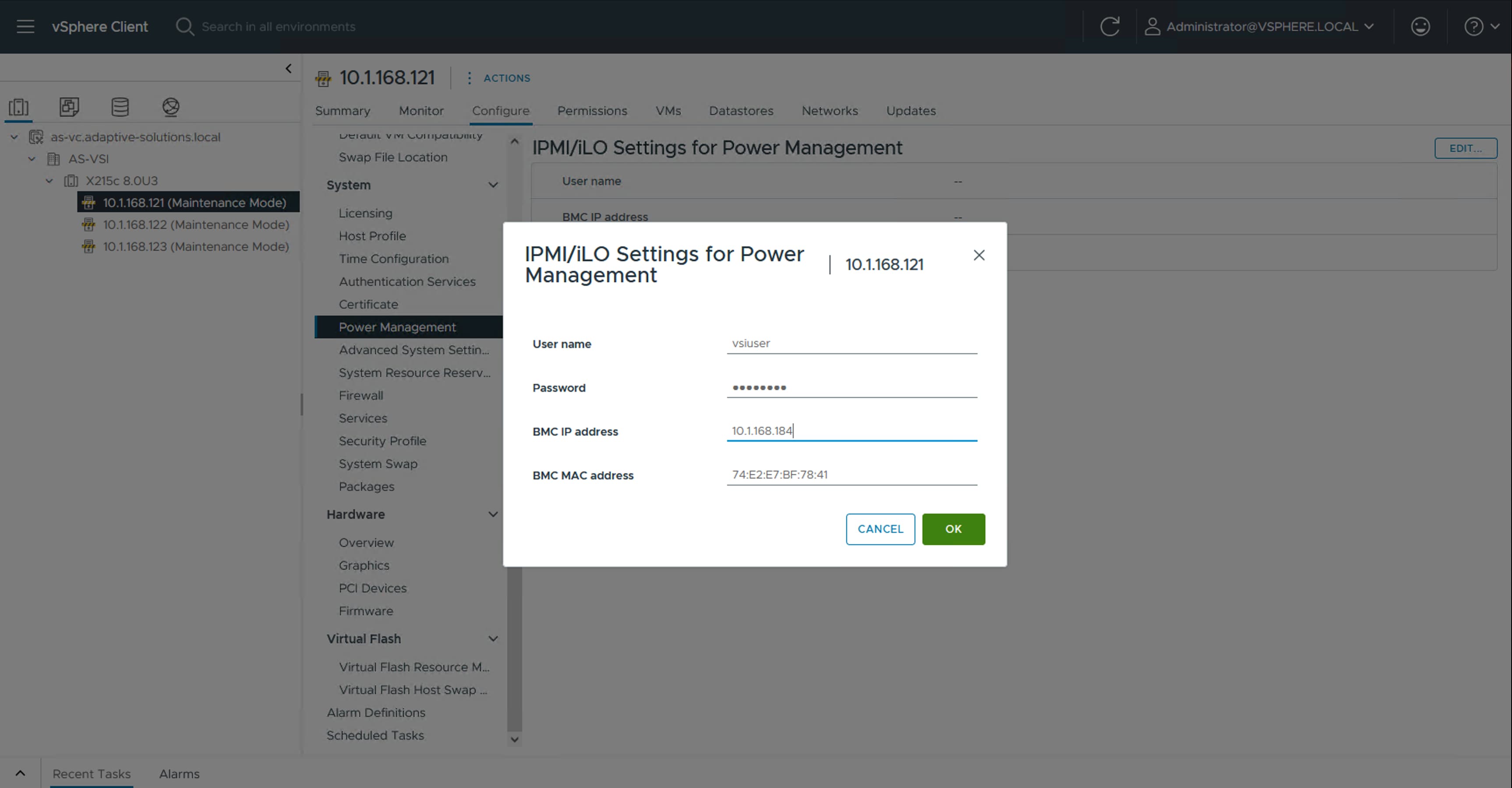

Step 5. Provide a username (for example, vsiuser), choose a role (for example, admin), and provide a password.

Note: The username and password combination defined here will be used as an alternate to log in to KVMs and can be used for IPMI.

Step 6. Click Create to finish configuring the user.

Step 7. Click Create to finish configuring the Local User Policy.

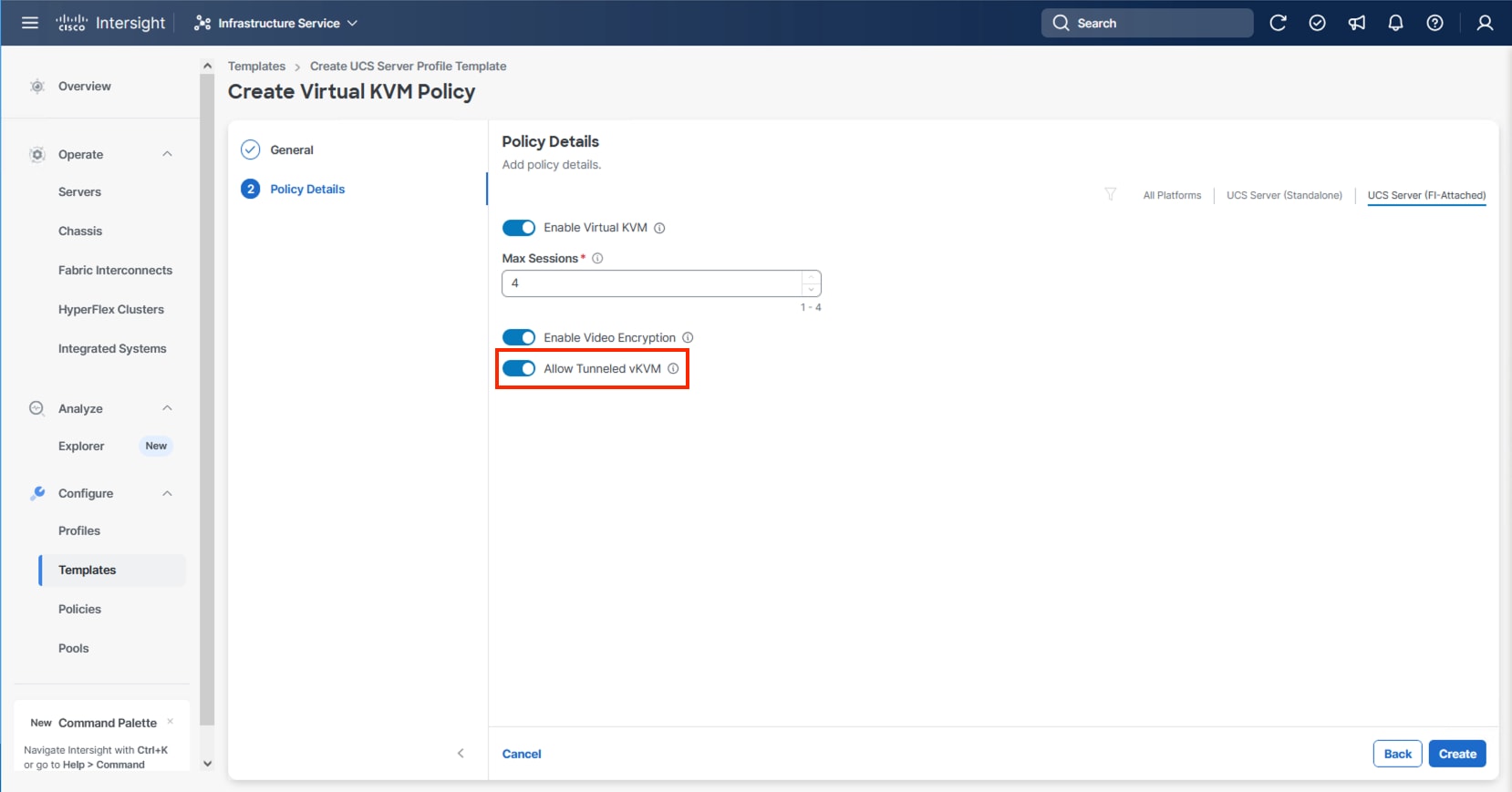

Procedure 4. Configure Virtual KVM Policy

Step 1. Click Select Policy next to Virtual KVM and click Create New.

Step 2. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-KVM-Policy).

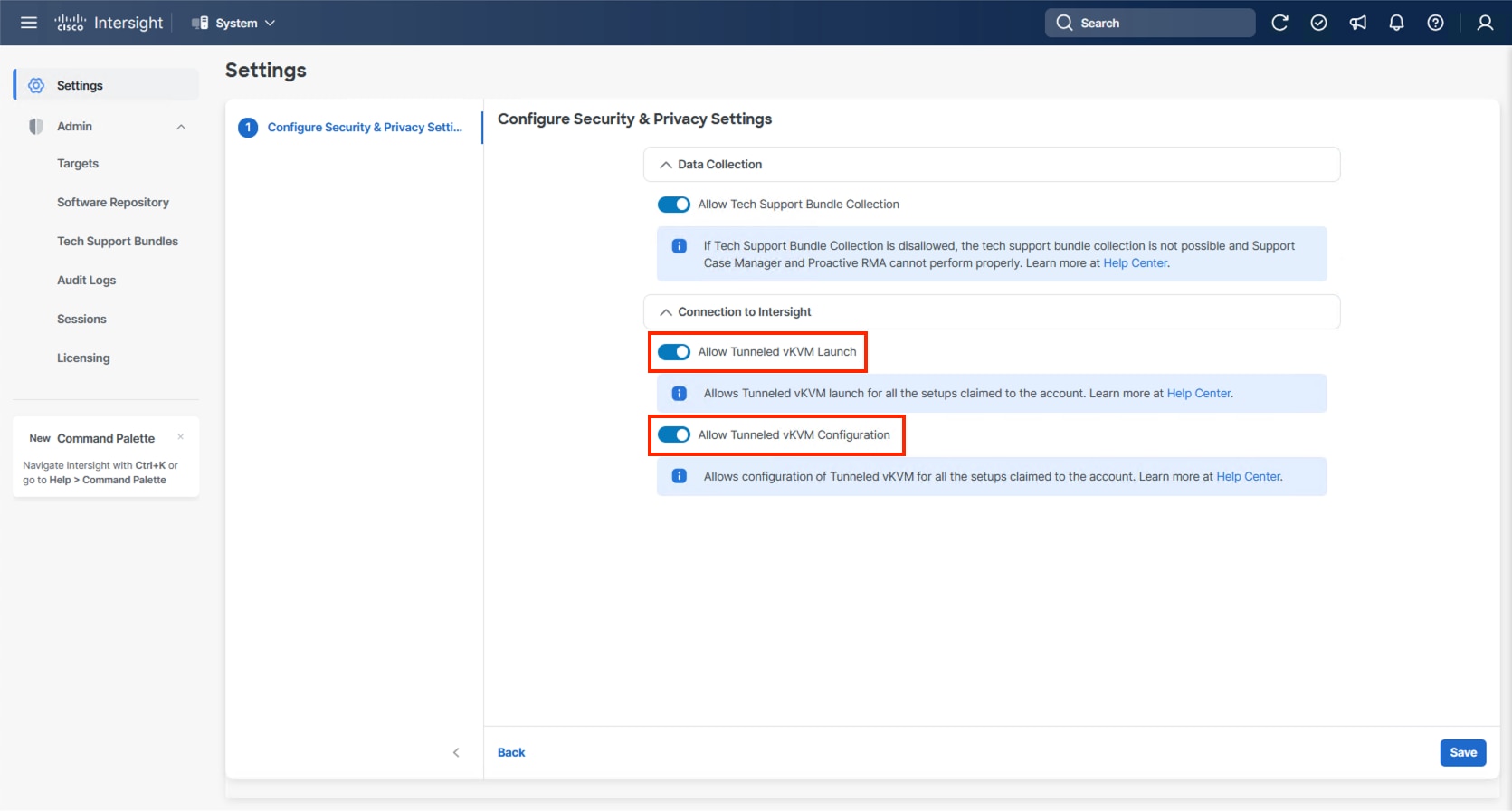

Step 3. Turn on Allow Tunneled vKVM.

Step 4. Click Create.

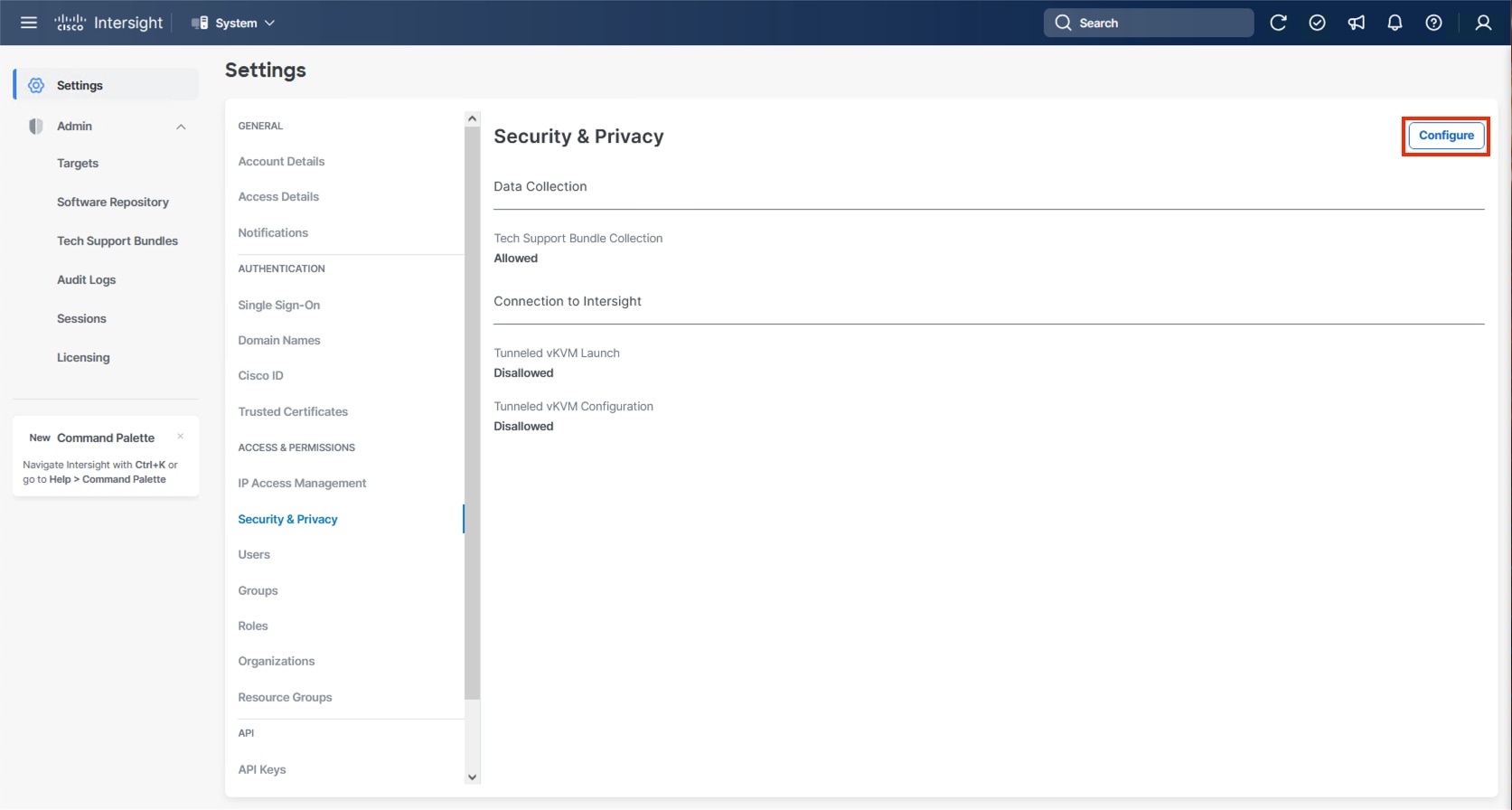

Note: To fully enable Tunneled KVM, after the Server Profile Template has been created, go to System > Settings > Security and Privacy and click Configure. Turn on Allow Tunneled vKVM Launch and Allow Tunneled vKVM Configuration.

Step 5. Click Next to continue to Storage Configuration.

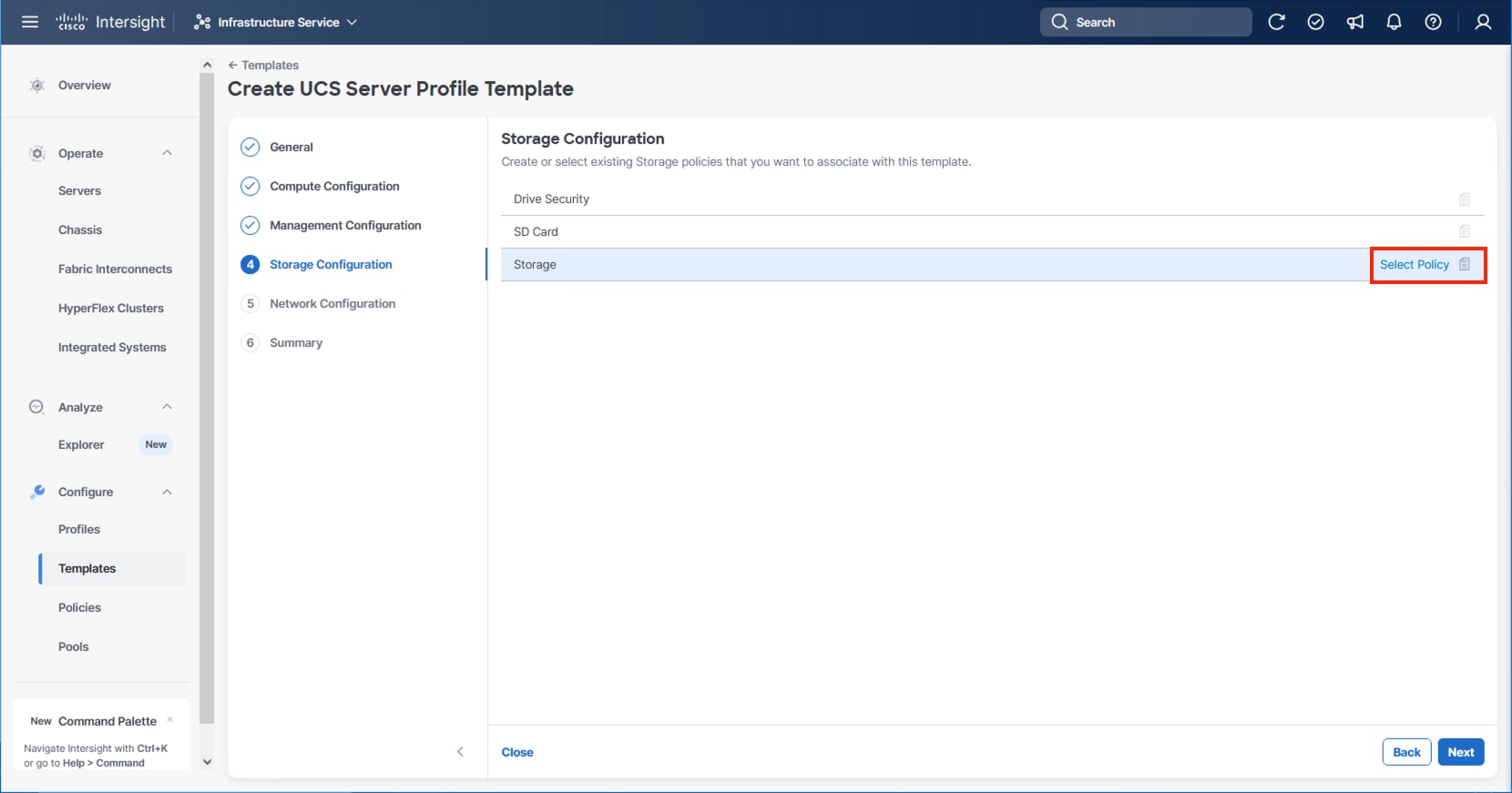

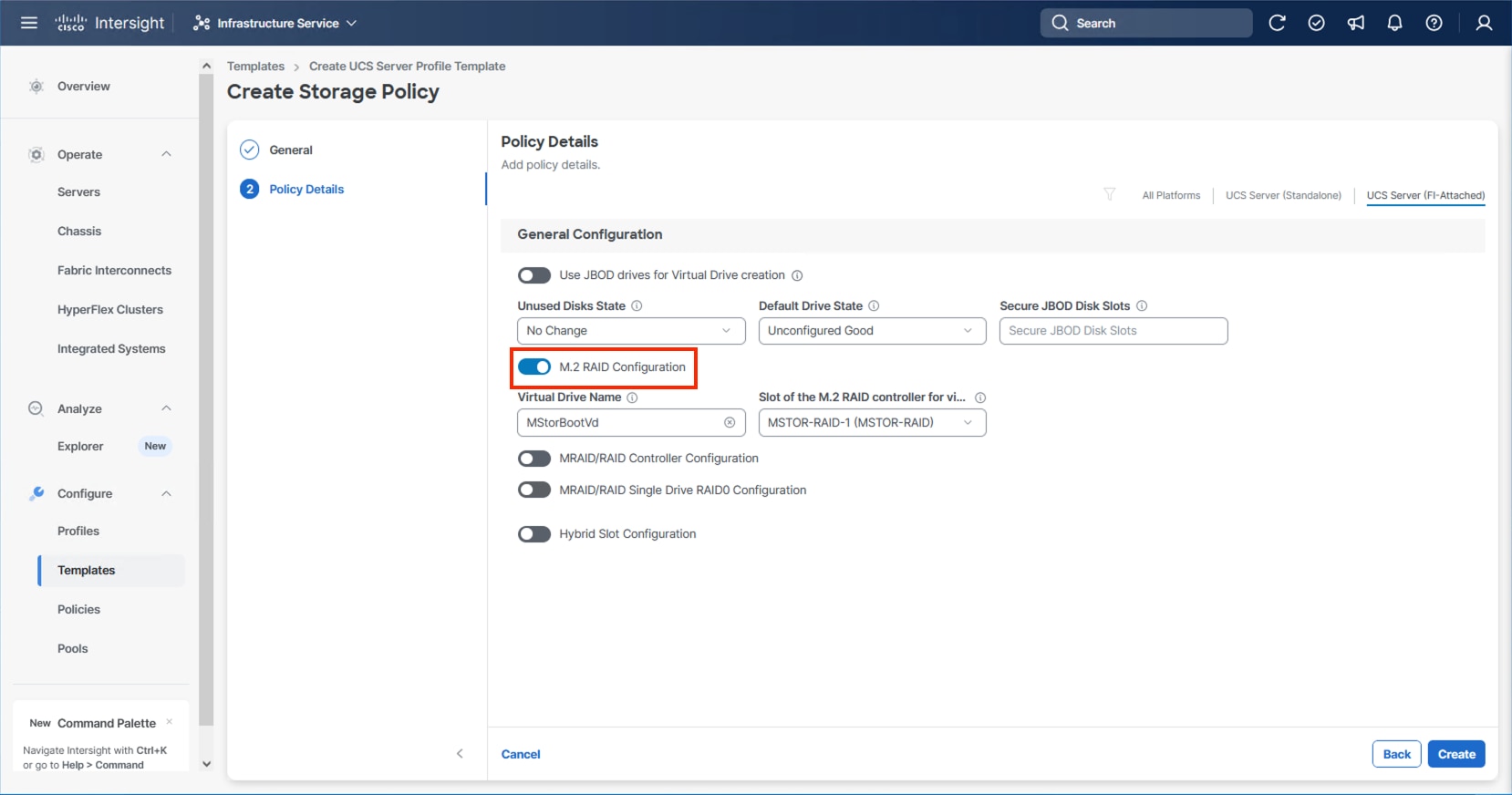

Storage Configuration

The Storage Configuration section of the Server Profile Template is required for configuring internal storage in the UCS servers using M.2 boot.

Procedure 1. Create Storage Configuration

Step 1. Click Select Policy and click Create New.

Step 2. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-Storage-Policy).

Step 3. Click Next.

Step 4. Enable M.2 RAID Configuration.

Step 5. Leave the default Virtual Drive Name, and keep the Slot of the M.2 RAID controller set to MSTOR-RAID-1. Click Create.

Step 6. Click Next on the Storage Configuration screen.

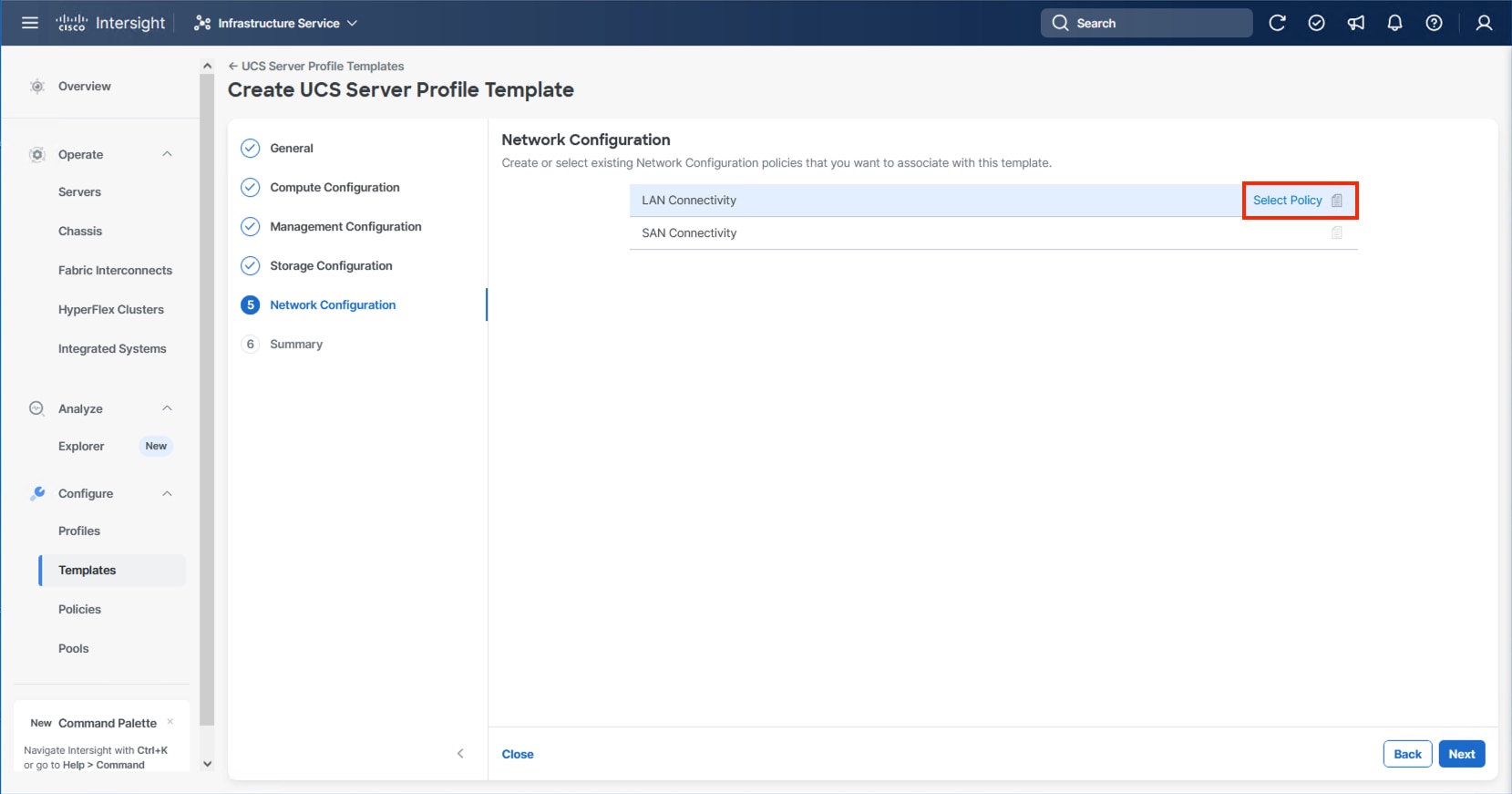

This section details how to create the LAN Connectivity policy used by the derived Server Profiles.

LAN Connectivity

Procedure 1. Create Network Configuration - LAN Connectivity Policy

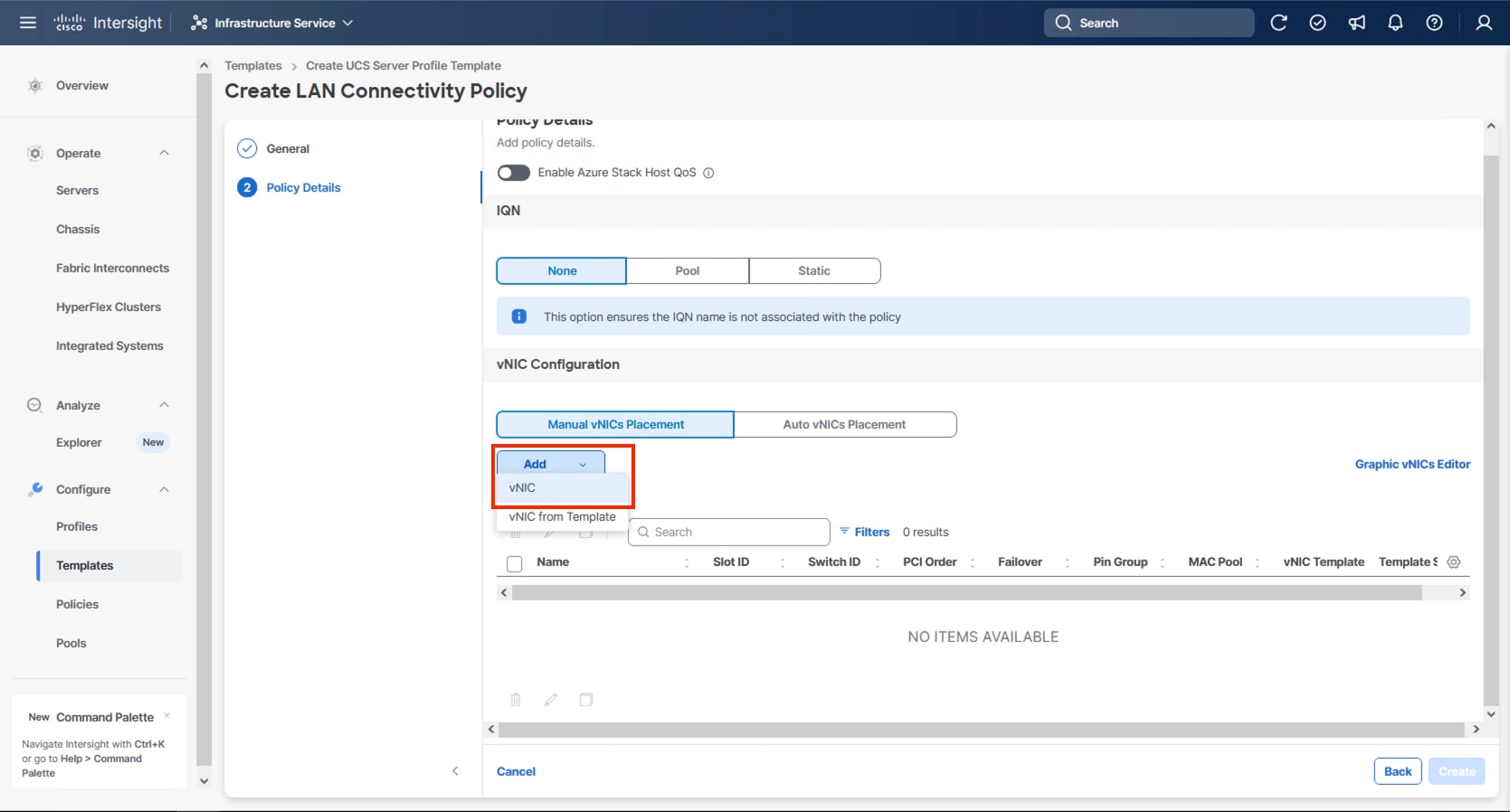

The LAN connectivity policy defines the connections and network communication resources between the server and the LAN. This policy uses pools to assign MAC addresses to servers and to identify the vNICs that the servers use to communicate with the network.

For consistent vNIC placement, manual vNIC placement is used. The six vNICs configured are listed in Table 11.

Table 11. vNICs defined in LAN Connectivity

| vNIC/vHBA Name | Slot ID | Switch ID | PCI Order | VLANs |
|---|---|---|---|---|
| 00-vSwitch0-A | MLOM | A | 0 | IB-Mgmt |
| 01-vSwitch0-B | MLOM | B | 1 | IB-Mgmt |
| 02-vDS0-A | MLOM | A | 2 | IB-Mgmt, VM Traffic, VM Traffic-A, VM Traffic-B, vMotion |
| 03-vDS0-B | MLOM | B | 3 | IB-Mgmt, VM Traffic, VM Traffic-A, VM Traffic-B, vMotion |
| 04-IPStorage-A | MLOM | A | 4 | NVMe-TCP-A |
| 05-IPStorage-B | MLOM | B | 5 | NVMe-TCP-B |
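Because manual vNIC placement depends on the PCI Order and Switch ID values lining up, it can help to capture Table 11 as data and sanity-check it before entering it in Intersight. The following Python sketch is a hypothetical planning helper, not part of Intersight or its API. It checks that the PCI Order values run from 0 through 5 with no gaps or duplicates, that even PCI Order values map to Fabric A and odd ones to Fabric B (the pattern Table 11 follows), and that each vNIC name's -A/-B suffix matches its Switch ID.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VNic:
    name: str
    slot: str
    switch_id: str
    pci_order: int
    vlans: tuple[str, ...]


VDS0_VLANS = ("IB-Mgmt", "VM Traffic", "VM Traffic-A", "VM Traffic-B", "vMotion")

# Table 11 transcribed as data.
VNICS = [
    VNic("00-vSwitch0-A", "MLOM", "A", 0, ("IB-Mgmt",)),
    VNic("01-vSwitch0-B", "MLOM", "B", 1, ("IB-Mgmt",)),
    VNic("02-vDS0-A", "MLOM", "A", 2, VDS0_VLANS),
    VNic("03-vDS0-B", "MLOM", "B", 3, VDS0_VLANS),
    VNic("04-IPStorage-A", "MLOM", "A", 4, ("NVMe-TCP-A",)),
    VNic("05-IPStorage-B", "MLOM", "B", 5, ("NVMe-TCP-B",)),
]


def validate_placement(vnics: list[VNic]) -> list[str]:
    """Return a list of problems; an empty list means the plan looks consistent."""
    problems = []
    orders = sorted(v.pci_order for v in vnics)
    if orders != list(range(len(vnics))):
        problems.append(f"PCI order values are not 0..{len(vnics) - 1}: {orders}")
    for v in vnics:
        # Table 11 pattern: even PCI order on fabric A, odd PCI order on fabric B.
        expected = "A" if v.pci_order % 2 == 0 else "B"
        if v.switch_id != expected:
            problems.append(f"{v.name}: switch ID {v.switch_id}, expected {expected}")
        # The -A/-B name suffix should match the fabric the vNIC is pinned to.
        if not v.name.endswith("-" + v.switch_id):
            problems.append(f"{v.name}: name suffix does not match switch ID {v.switch_id}")
    return problems


print(validate_placement(VNICS))
```

An empty list means the transcribed plan is internally consistent. Any entry points to the row to recheck before you create the vNIC.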

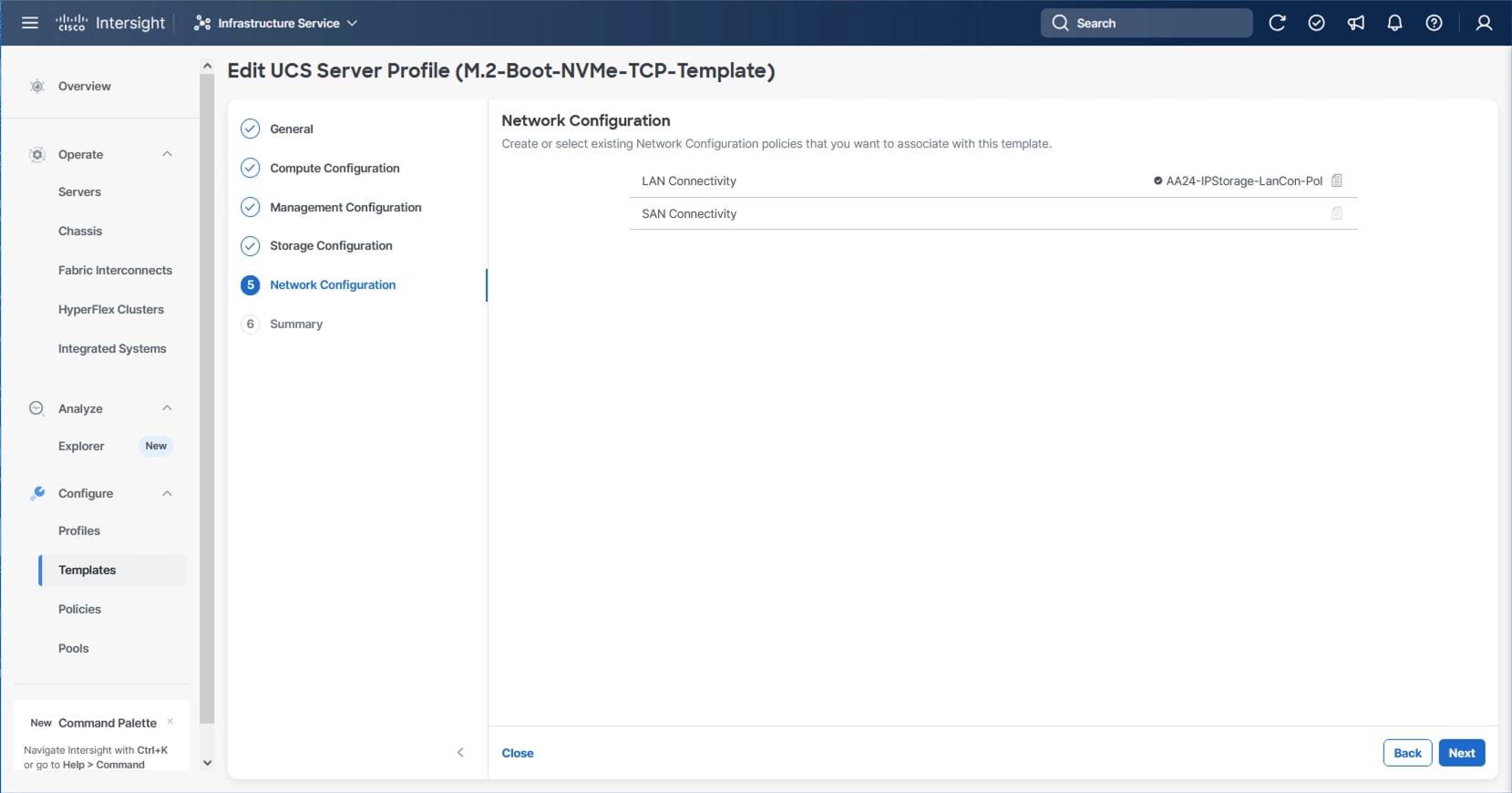

Step 1. Click Select Policy next to LAN Connectivity and click Create New from the column that appears to the right.

Step 2. Verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a name for the policy (for example, AA24-IPStorage-LanCon). Click Next.

Step 3. In the vNIC Configuration section, from the Add drop-down list, select vNIC.

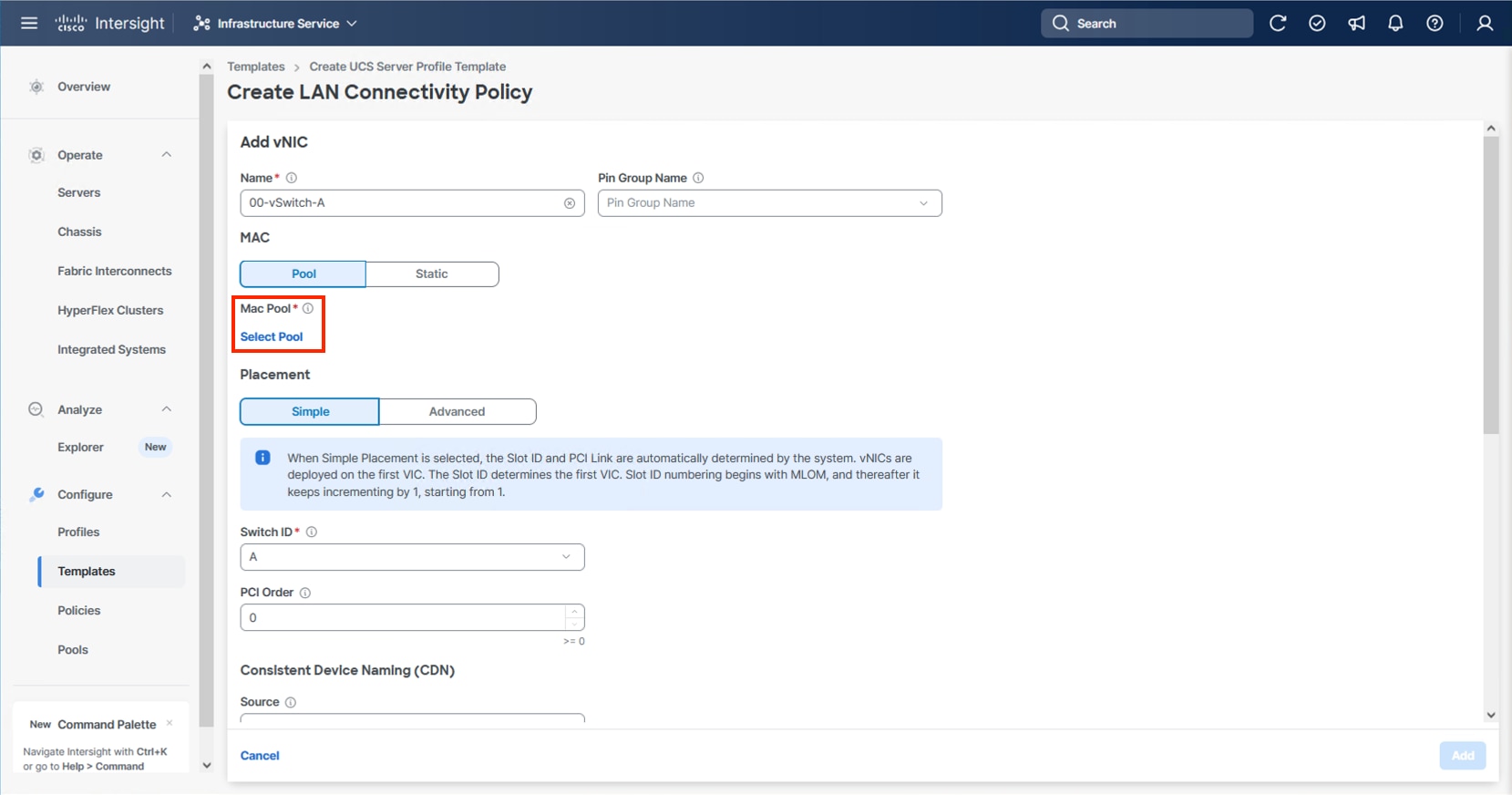

Step 4. Specify the appropriate first vNIC name from Table 11, click Select Pool and click Create New in the pane that appears to the right.

Note: When you create the first vNIC, no MAC address pool has been defined yet, so a new MAC address pool must be created. Two separate MAC address pools are configured, one for each fabric. MAC-Pool-A is reused for all Fabric A vNICs, and MAC-Pool-B is reused for all Fabric B vNICs.

Table 12. MAC Address Pools

| Pool Name | Starting MAC Address | Size | vNICs |
|---|---|---|---|
| MAC-Pool-A | 00:25:B5:24:0A:00 | 256 | 00-vSwitch0-A, 02-vDS0-A, 04-IPStorage-A |
| MAC-Pool-B | 00:25:B5:24:0B:00 | 256 | 01-vSwitch0-B, 03-vDS0-B, 05-IPStorage-B |

Note: Each server requires 3 MAC addresses from each pool. Adjust the size of the pool according to your requirements.
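The pool sizing in the note above comes down to simple arithmetic. This Python sketch uses hypothetical helper functions, not an Intersight API. It expands each pool from its starting address and size in Table 12, confirms that the Fabric A and Fabric B ranges do not overlap, and computes how many servers a 256-address pool can serve at 3 MAC addresses per server. The sketch only enumerates the range and does not model the order in which Intersight assigns addresses.

```python
def mac_to_int(mac: str) -> int:
    """Convert 'AA:BB:CC:DD:EE:FF' to an integer."""
    return int(mac.replace(":", ""), 16)


def int_to_mac(value: int) -> str:
    """Convert an integer back to upper-case colon-separated MAC notation."""
    raw = f"{value:012X}"
    return ":".join(raw[i:i + 2] for i in range(0, 12, 2))


def pool_addresses(start: str, size: int) -> list[str]:
    """List every address in a pool defined by a starting MAC and a size."""
    base = mac_to_int(start)
    return [int_to_mac(base + i) for i in range(size)]


def servers_supported(pool_size: int, macs_per_server: int = 3) -> int:
    """How many servers a pool can serve when each needs macs_per_server addresses."""
    return pool_size // macs_per_server


pool_a = pool_addresses("00:25:B5:24:0A:00", 256)
pool_b = pool_addresses("00:25:B5:24:0B:00", 256)

print(pool_a[0], pool_a[-1])             # 00:25:B5:24:0A:00 00:25:B5:24:0A:FF
print(set(pool_a).isdisjoint(pool_b))    # True: the fabric pools do not overlap
print(servers_supported(256))            # 85
```

For the three-server deployment in this design, each pool hands out 9 addresses, so 256 leaves ample room for growth.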

Step 5. Select the MAC pool for the appropriate fabric, or click Create New if it has not been created yet.

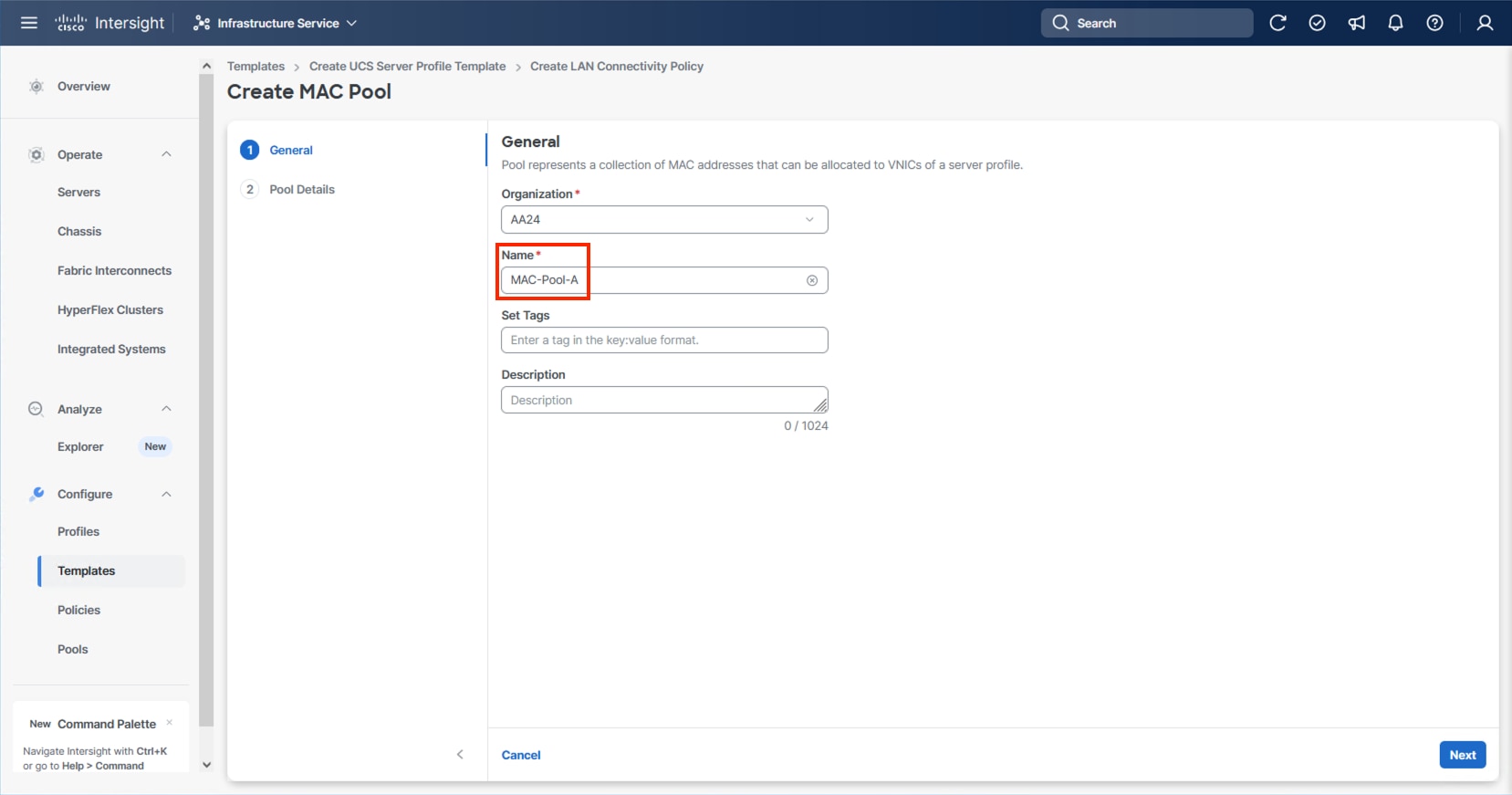

Step 6. If creating a new pool, verify that the correct organization is selected from the drop-down list (for example, AA24) and provide a Name for the pool from Table 12 depending on the vNIC being created (for example, MAC-Pool-A for Fabric A).

Step 7. Click Next.

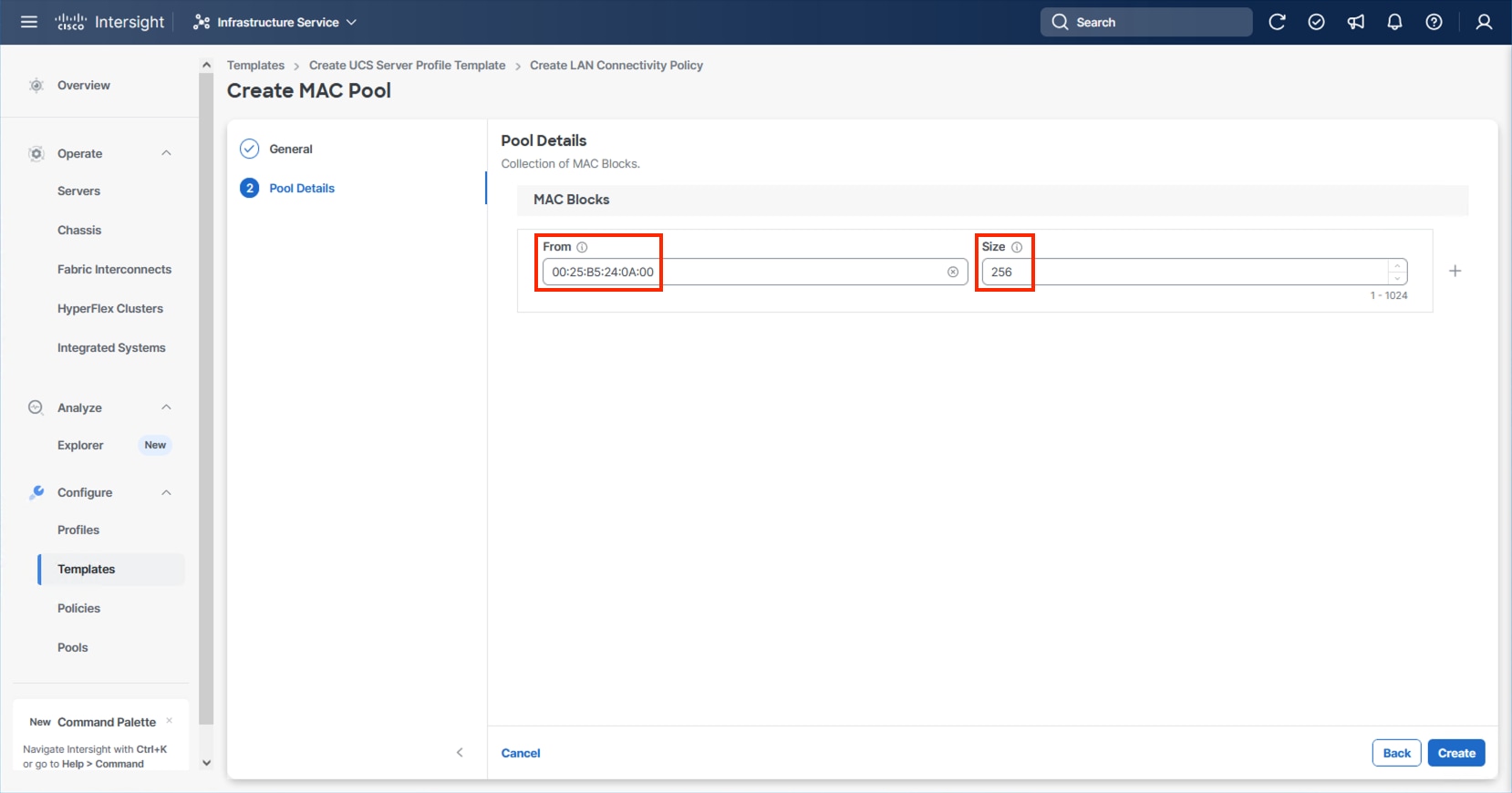

Step 8. Provide the starting MAC address from Table 12 (for example, 00:25:B5:24:0A:00).

Step 9. Provide the size of the MAC address pool from Table 12 (for example, 256).

Step 10. Click Create to finish creating the MAC address pool.

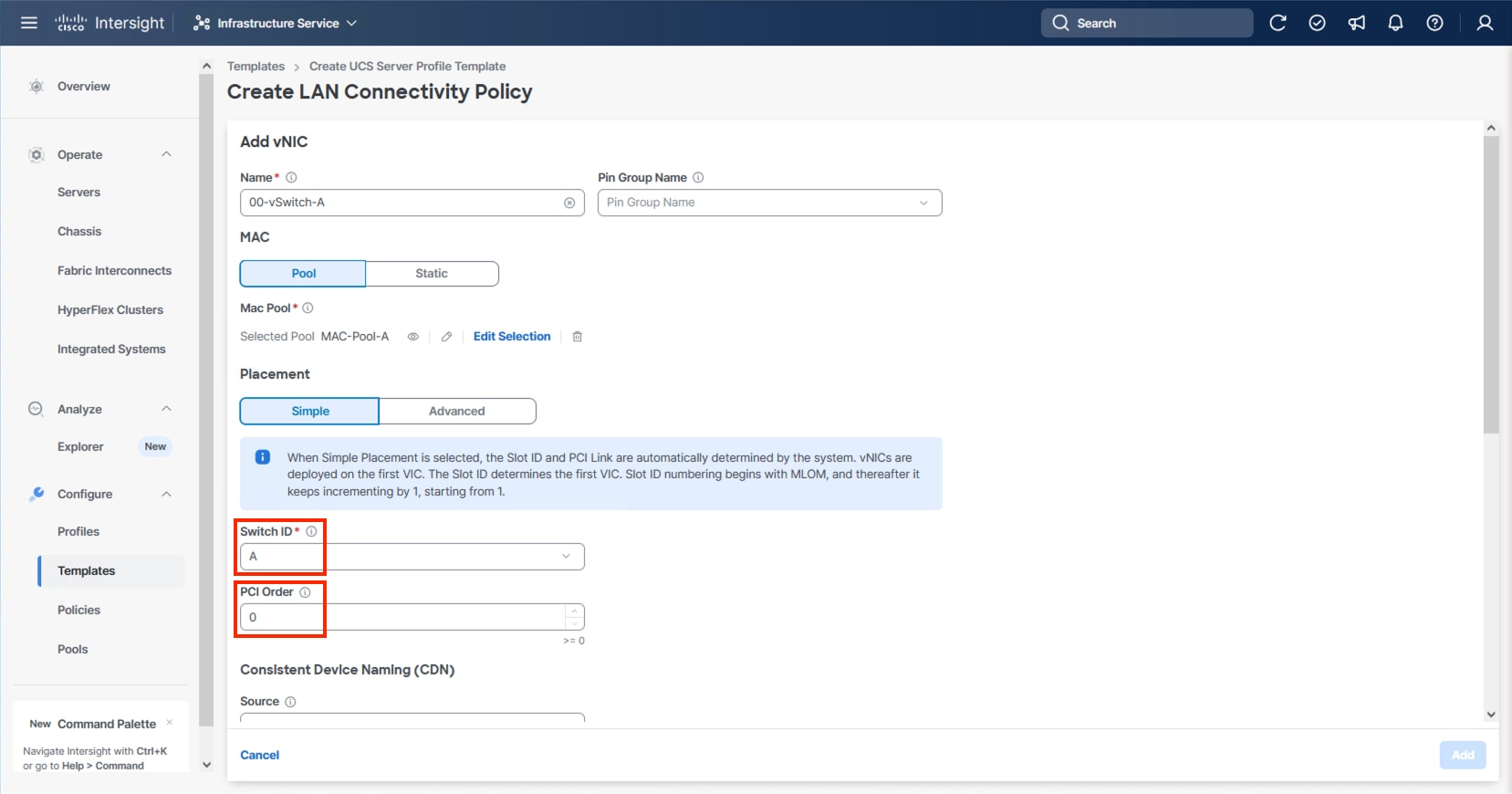

Step 11. Confirm the Switch ID and PCI Order values are correct for the vNIC being configured per Table 11.

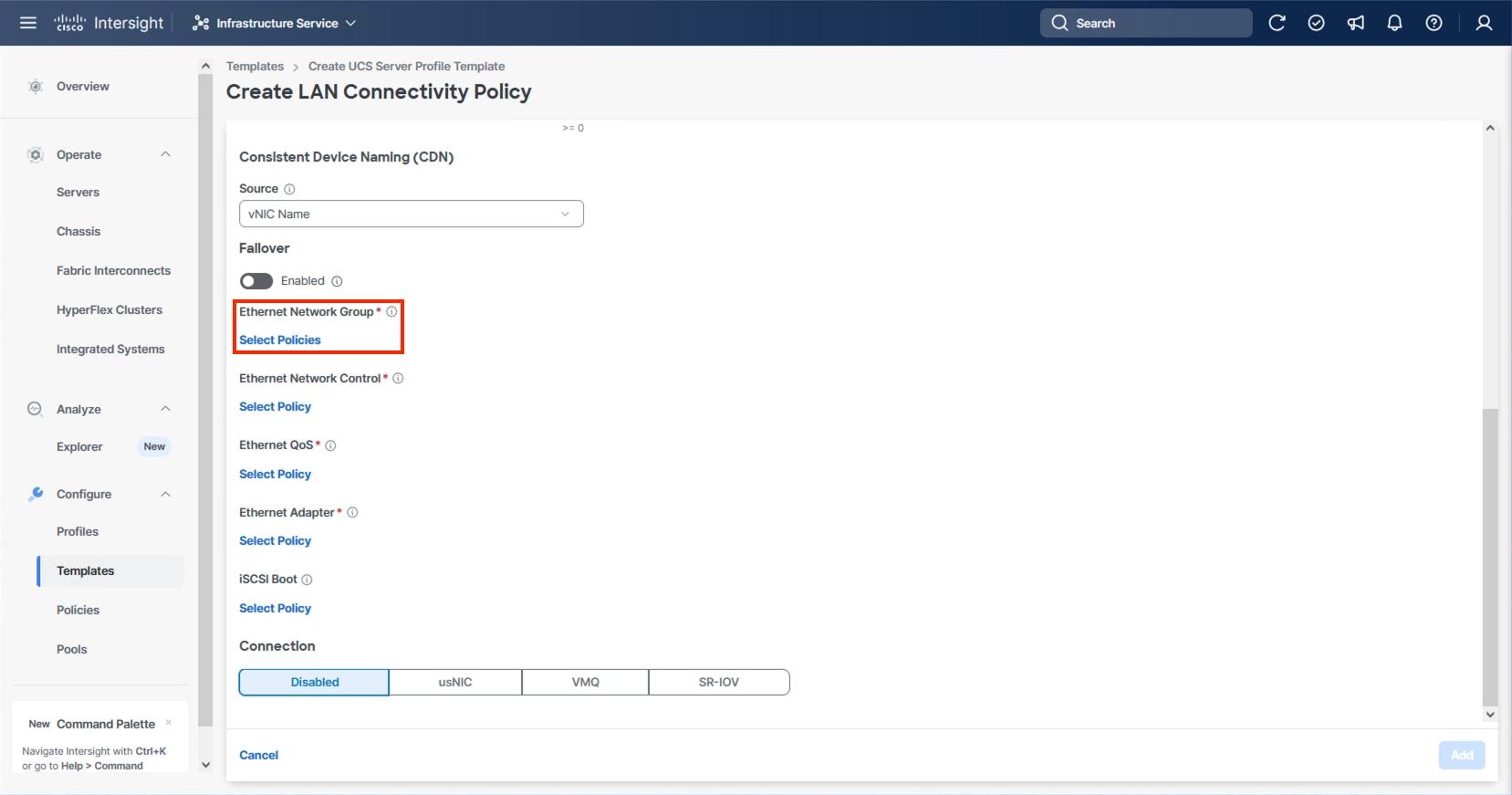

Step 12. Click Select Policy for Ethernet Network Group.

Note: Four Ethernet Network Group policies will be created: one for the vSwitch0 vNICs, one for the vDS0 vNICs, and one each for the A-side and B-side IPStorage vNICs. Each policy defines the native VLAN and the VLANs carried on the vNICs.
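The count of four policies follows directly from Table 11. vNICs that carry the same VLAN list and the same native VLAN can share one Ethernet Network Group policy. The Python sketch below groups the Table 11 vNICs on that key. This procedure states only the vSwitch0 native VLAN (IB-Mgmt, set in Step 17), so the native VLAN for the other vNICs is modeled as None. That value is an assumption for illustration.

```python
from collections import defaultdict

VDS0_VLANS = ("IB-Mgmt", "VM Traffic", "VM Traffic-A", "VM Traffic-B", "vMotion")

# vNIC -> (allowed VLANs, native VLAN), transcribed from Table 11.
# IB-Mgmt as the vSwitch0 native VLAN comes from Step 17; None elsewhere is an assumption.
VNIC_VLANS = {
    "00-vSwitch0-A": (("IB-Mgmt",), "IB-Mgmt"),
    "01-vSwitch0-B": (("IB-Mgmt",), "IB-Mgmt"),
    "02-vDS0-A": (VDS0_VLANS, None),
    "03-vDS0-B": (VDS0_VLANS, None),
    "04-IPStorage-A": (("NVMe-TCP-A",), None),
    "05-IPStorage-B": (("NVMe-TCP-B",), None),
}


def group_network_policies(vnic_vlans):
    """Group vNICs that share the same allowed-VLAN set and native VLAN."""
    groups = defaultdict(list)
    for vnic, (vlans, native) in vnic_vlans.items():
        groups[(frozenset(vlans), native)].append(vnic)
    return dict(groups)


groups = group_network_policies(VNIC_VLANS)
for (vlans, native), members in groups.items():
    print(f"native={native} vlans={sorted(vlans)} -> {members}")
print(len(groups), "Ethernet Network Group policies")
```

Including the native VLAN in the grouping key matters. Two vNIC sets with identical VLAN lists but different native VLANs still need separate policies.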

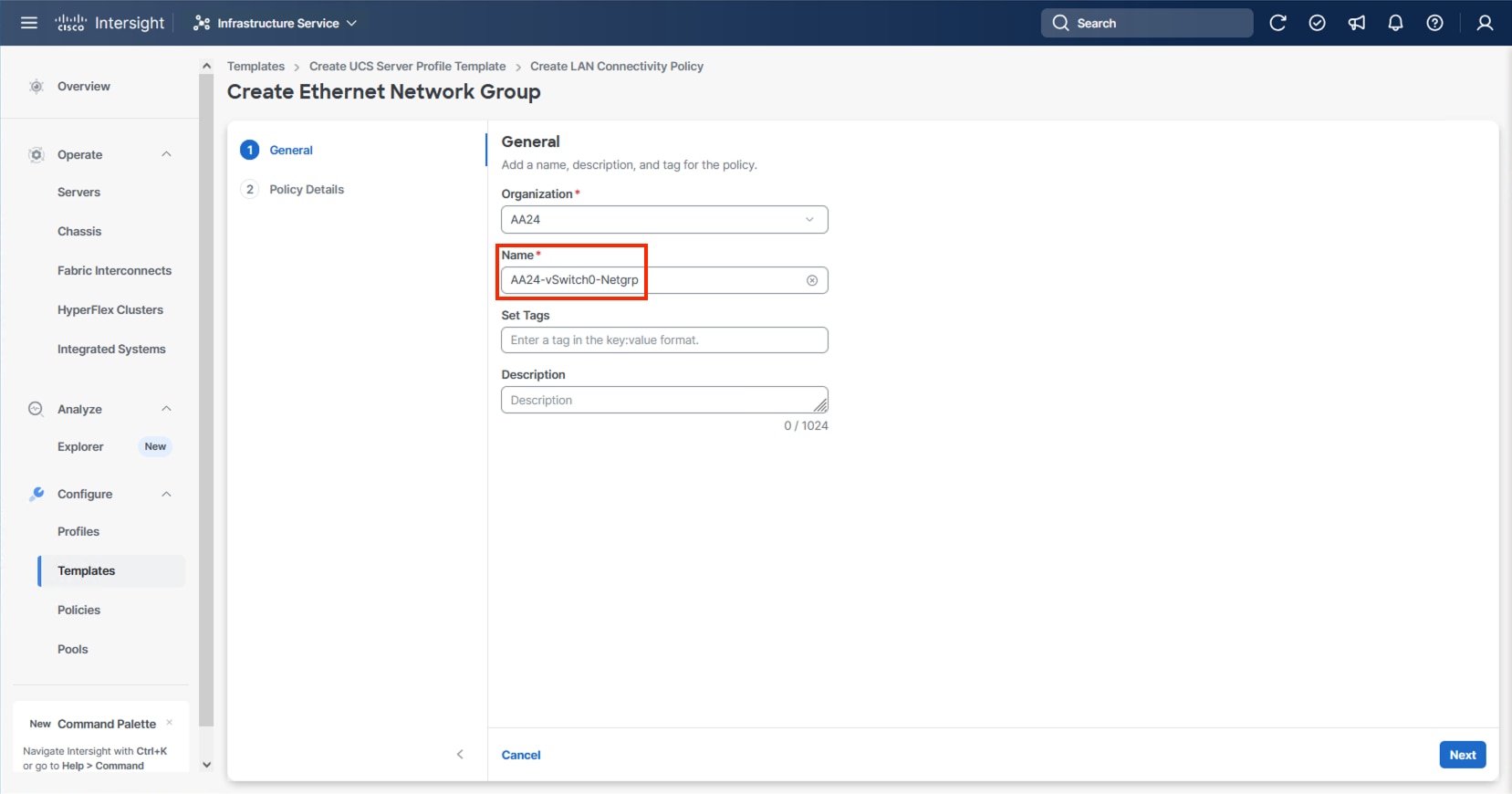

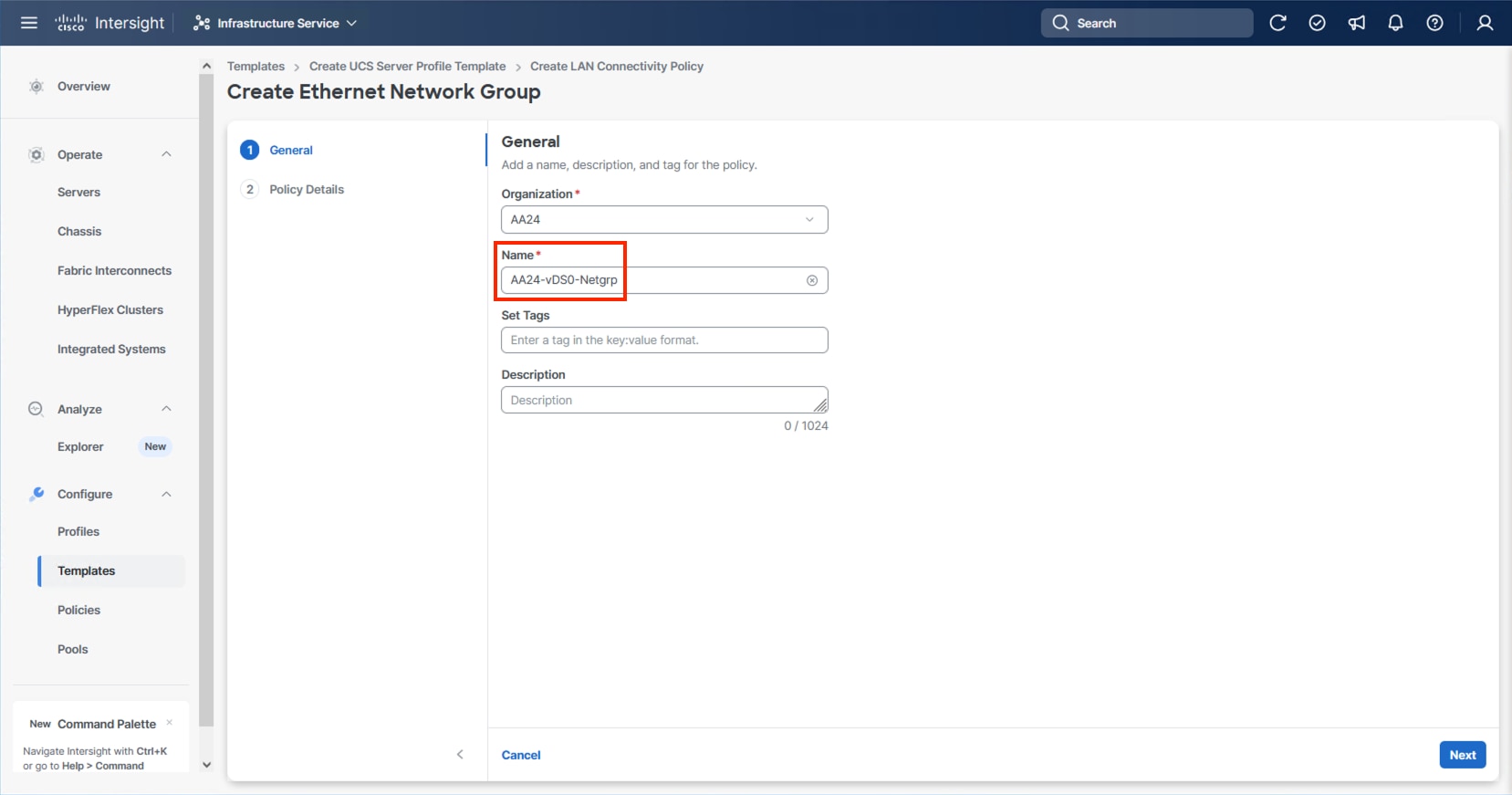

Step 13. When creating the vSwitch0 vNICs, if the vSwitch0 Ethernet Network Group policy has not been created yet, select Create New. If the policy already exists, select it and skip the next six steps.

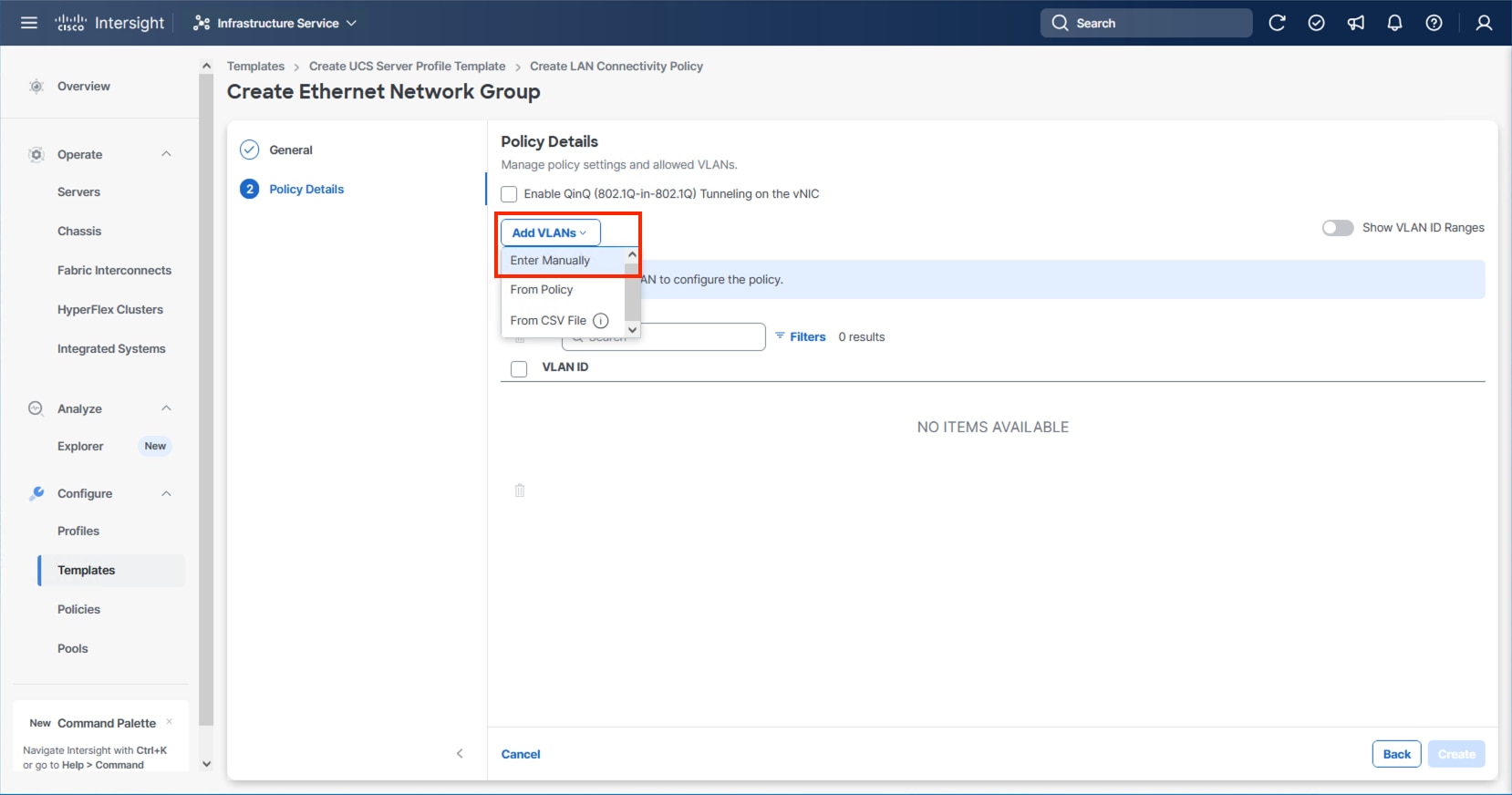

Step 14. Provide a Name for the policy and click Next.

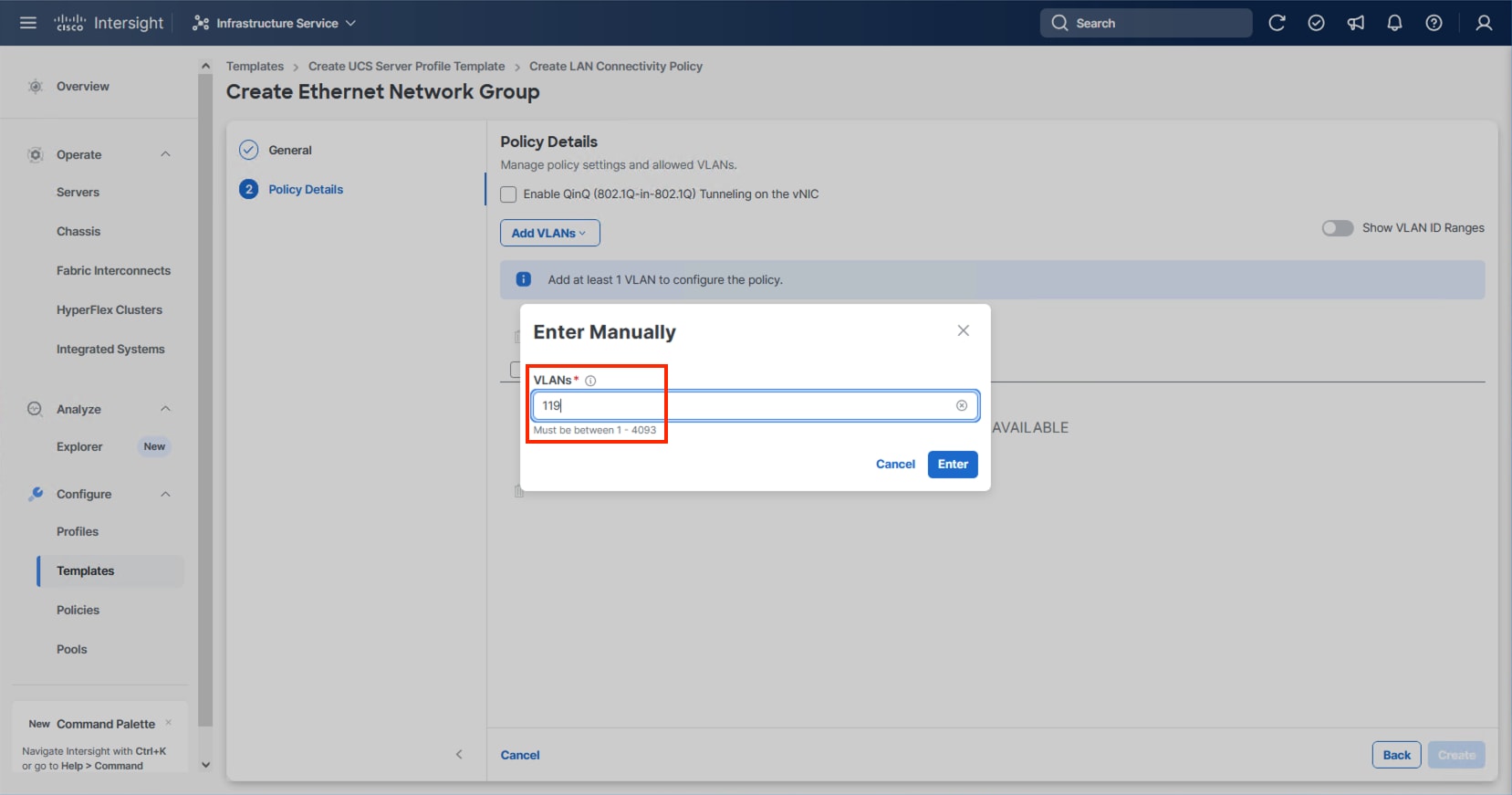

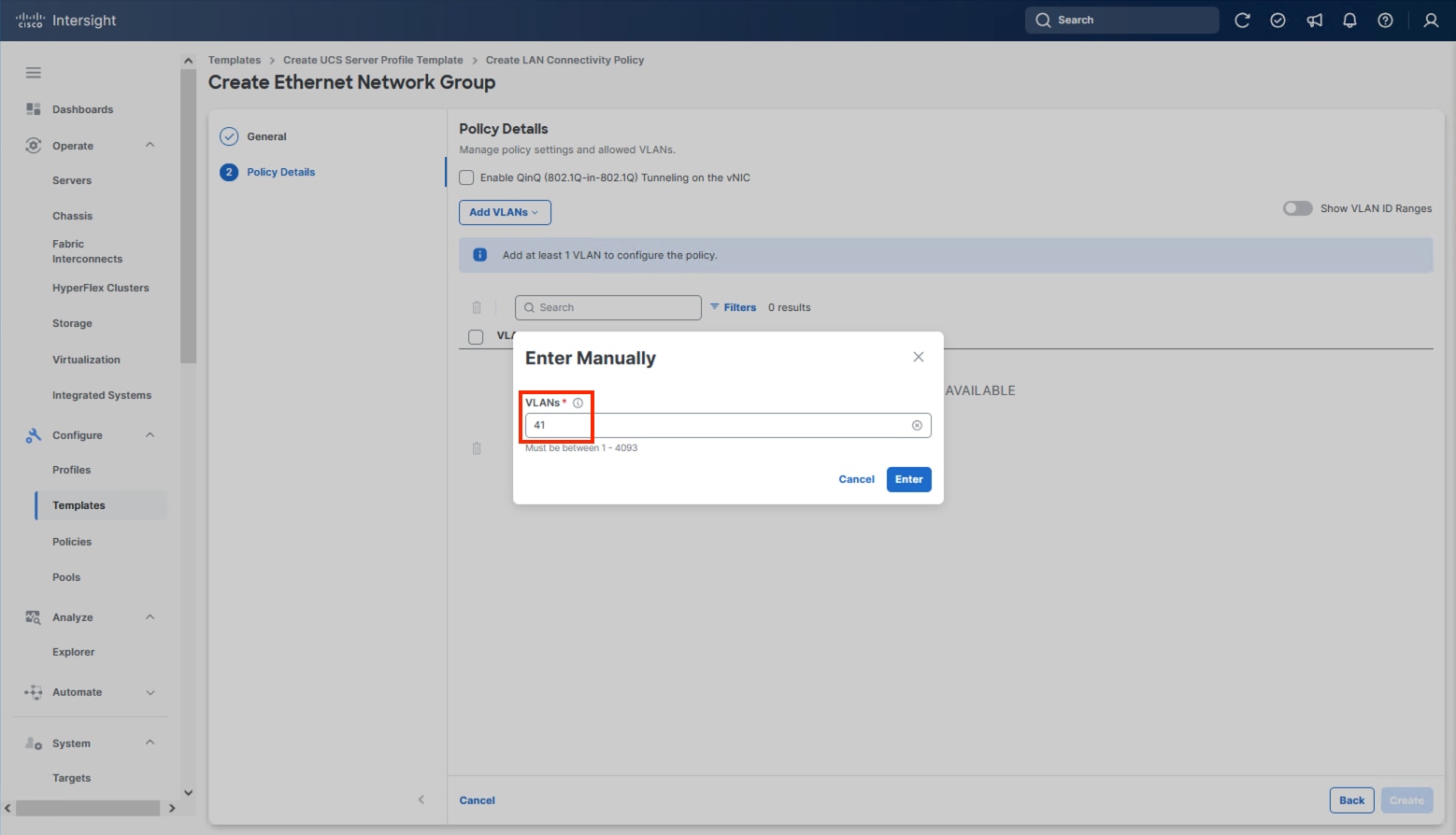

Step 15. Click Add VLANs and select Enter Manually.

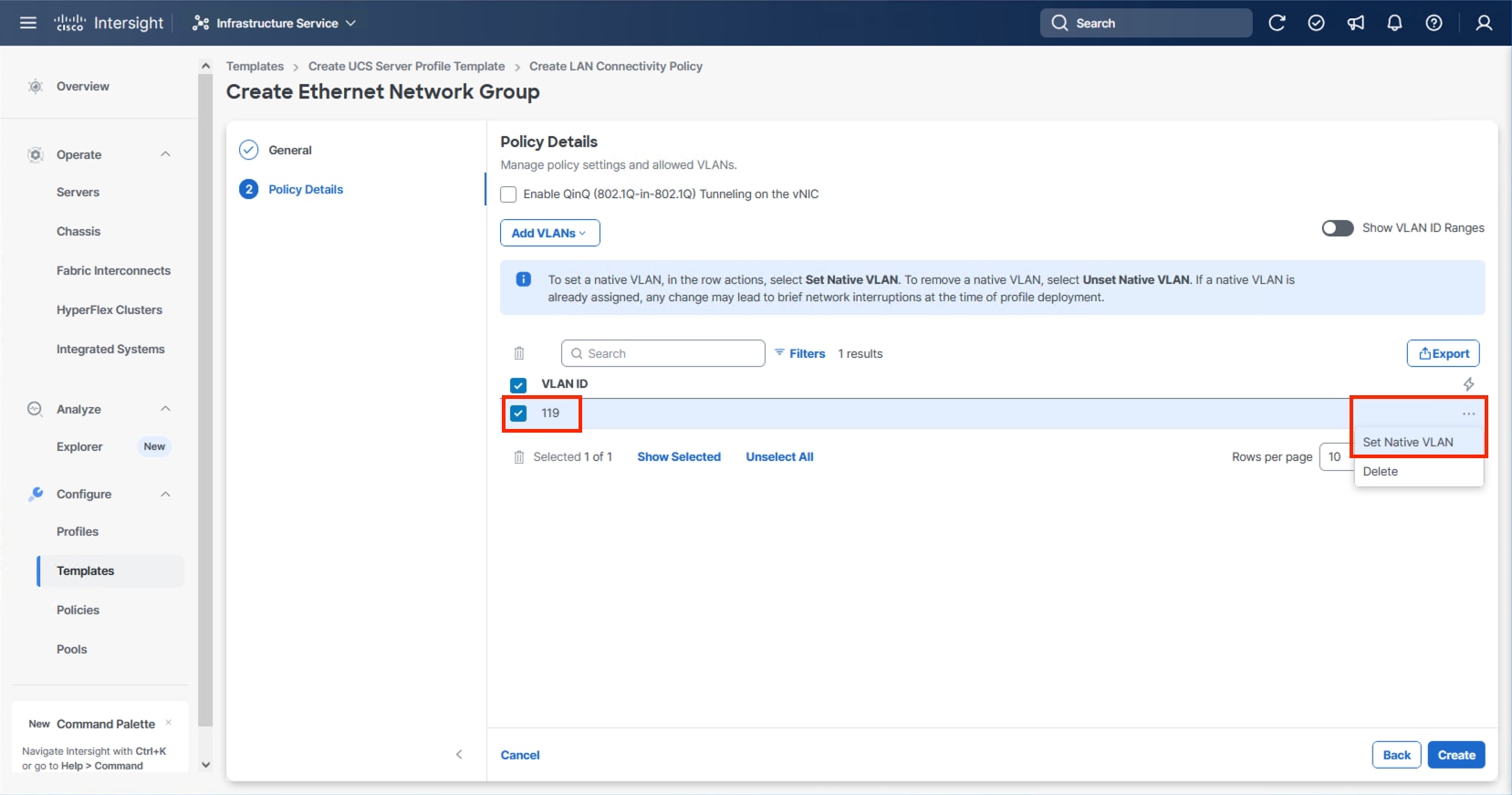

Step 16. Specify the IB-Mgmt VLAN to be used for the ESXi management network and click Enter.

Step 17. Select the entered IB-Mgmt VLAN, click the ellipsis on the right, and select Set Native VLAN.

Step 18. Click Create.

Step 19. Select the newly created Ethernet Network Group policy and click Select.

Step 20. When creating the vDS0 vNICs, if the vDS0 Ethernet Network Group policy has not been created yet, click Select Policy under Ethernet Network Group and select Create New from the right-side pane. If the policy already exists, select it and skip the next five steps.

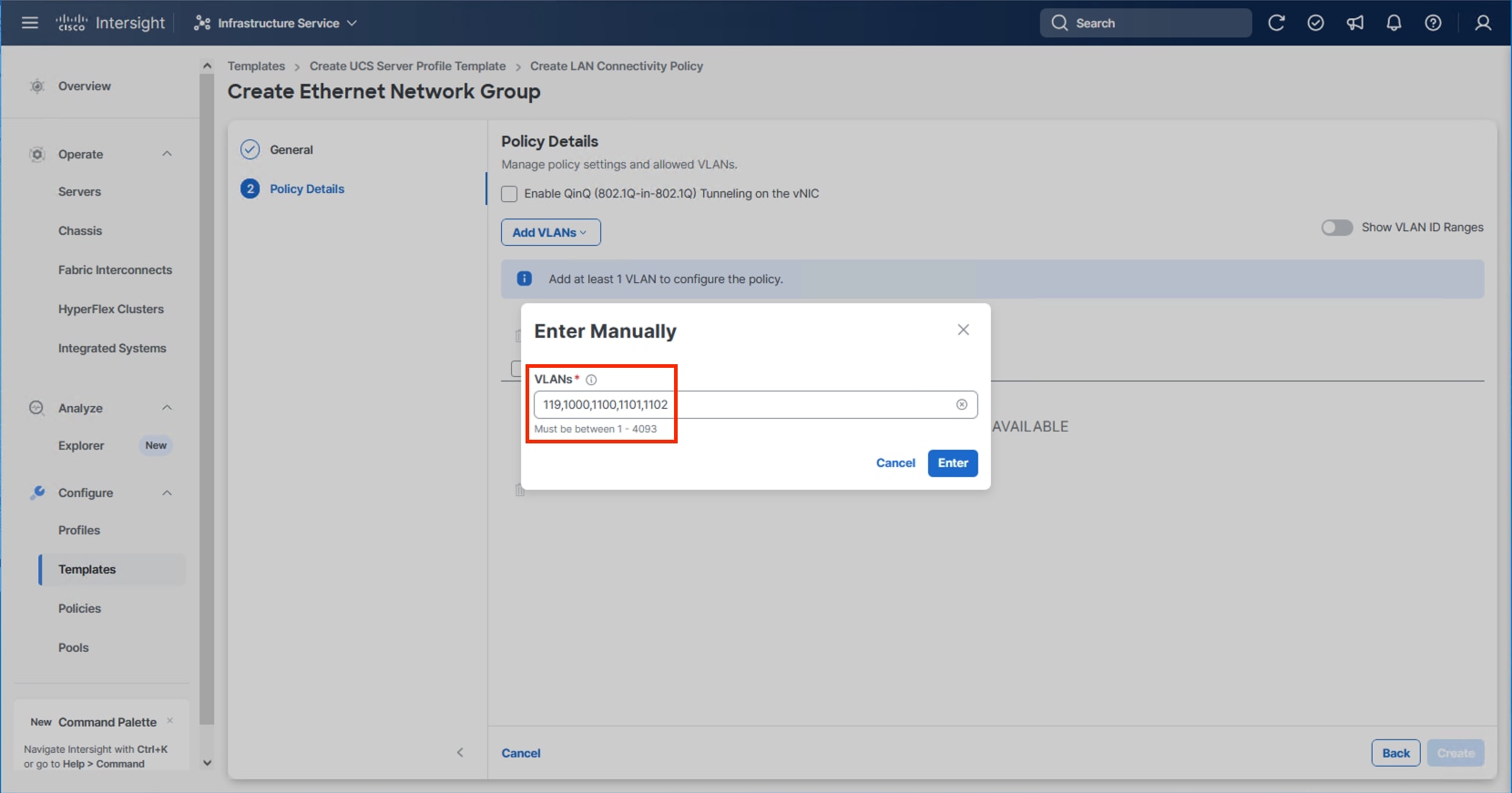

Step 21. Provide a Name for the policy and click Next.

Step 22. Click Add VLANs and select Enter Manually.

Step 23. Specify the vDS0 VLANs from Table 11 and click Enter.

Step 24. Click Create.

Step 25. Select the newly created Ethernet Network Group policy and click Select.

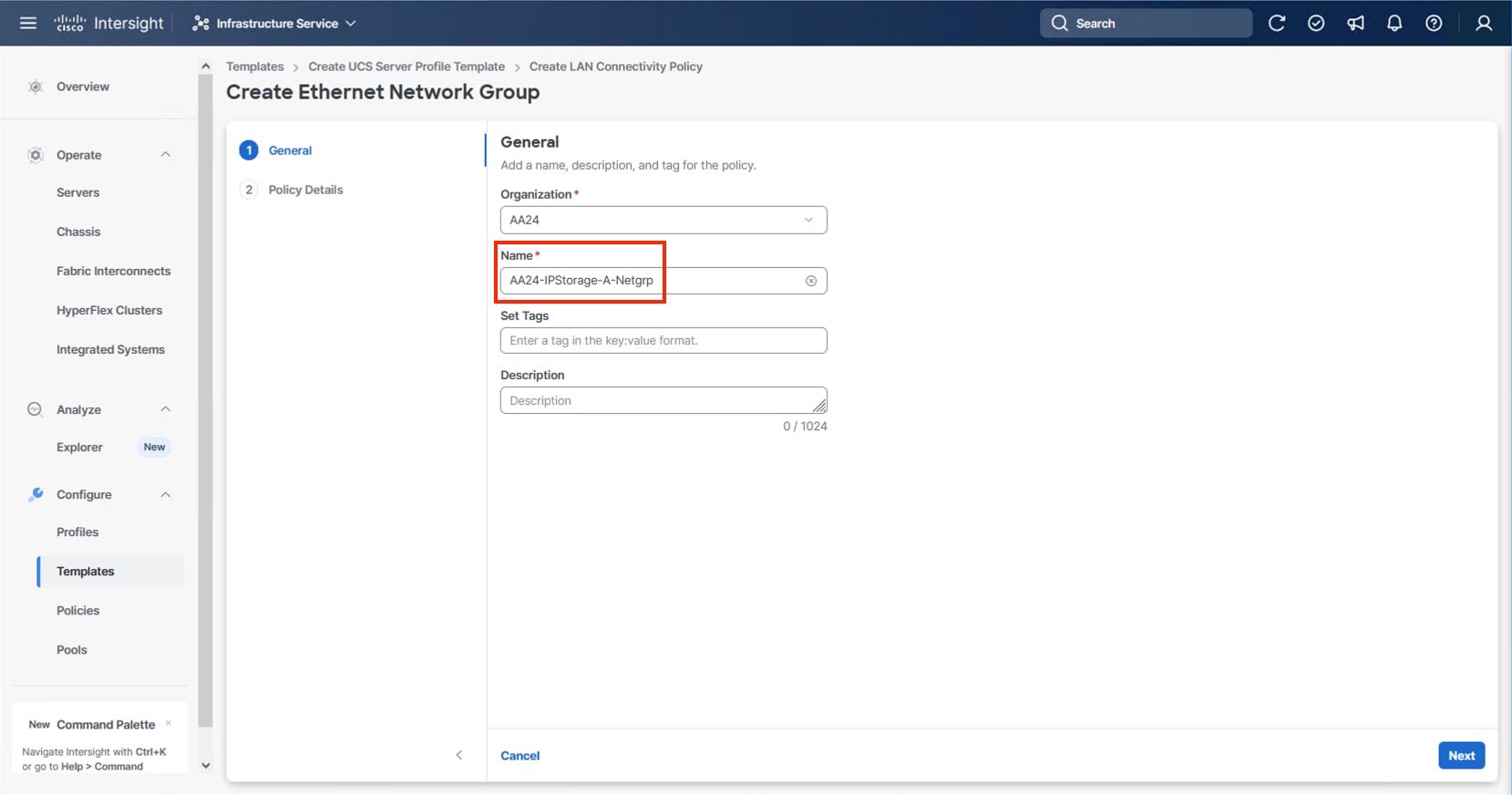

Note: When creating the IPStorage vNICs, a separate Ethernet Network Group policy is created for the A-side and the B-side vNIC. To create the A-fabric IPStorage Ethernet Network Group policy, select Create New. To create the B-fabric policy, follow the same steps using the equivalent B-side name and values.

Step 26. Provide a Name for the policy and click Next.

Step 27. Click Add VLANs and select Enter Manually.

Step 28. Specify the IPStorage-A VLAN from Table 11 (NVMe-TCP-A) and click Enter.

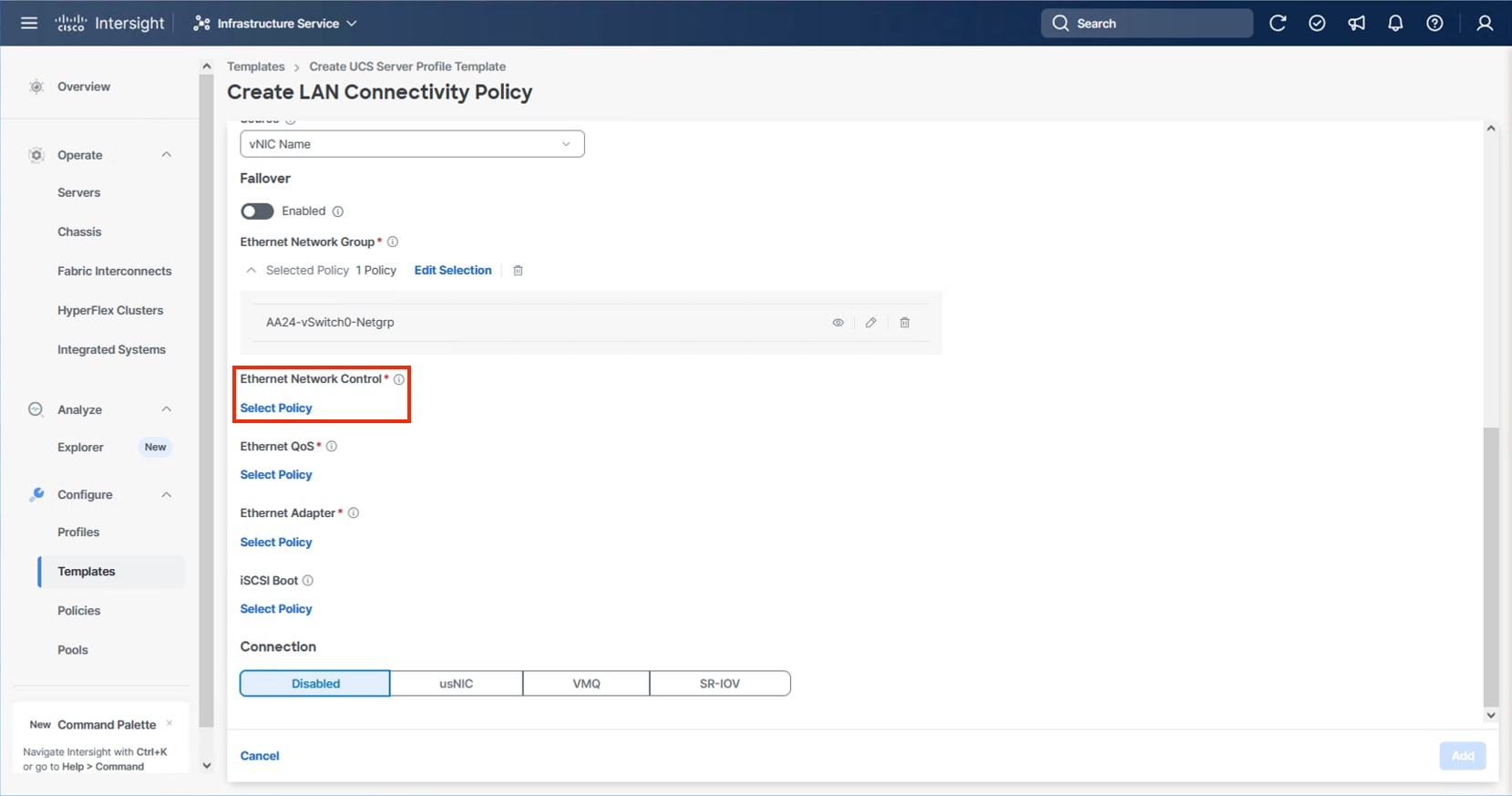

Step 29. Click Create.

Step 30. Select the newly created Ethernet Network Group policy and click Select.

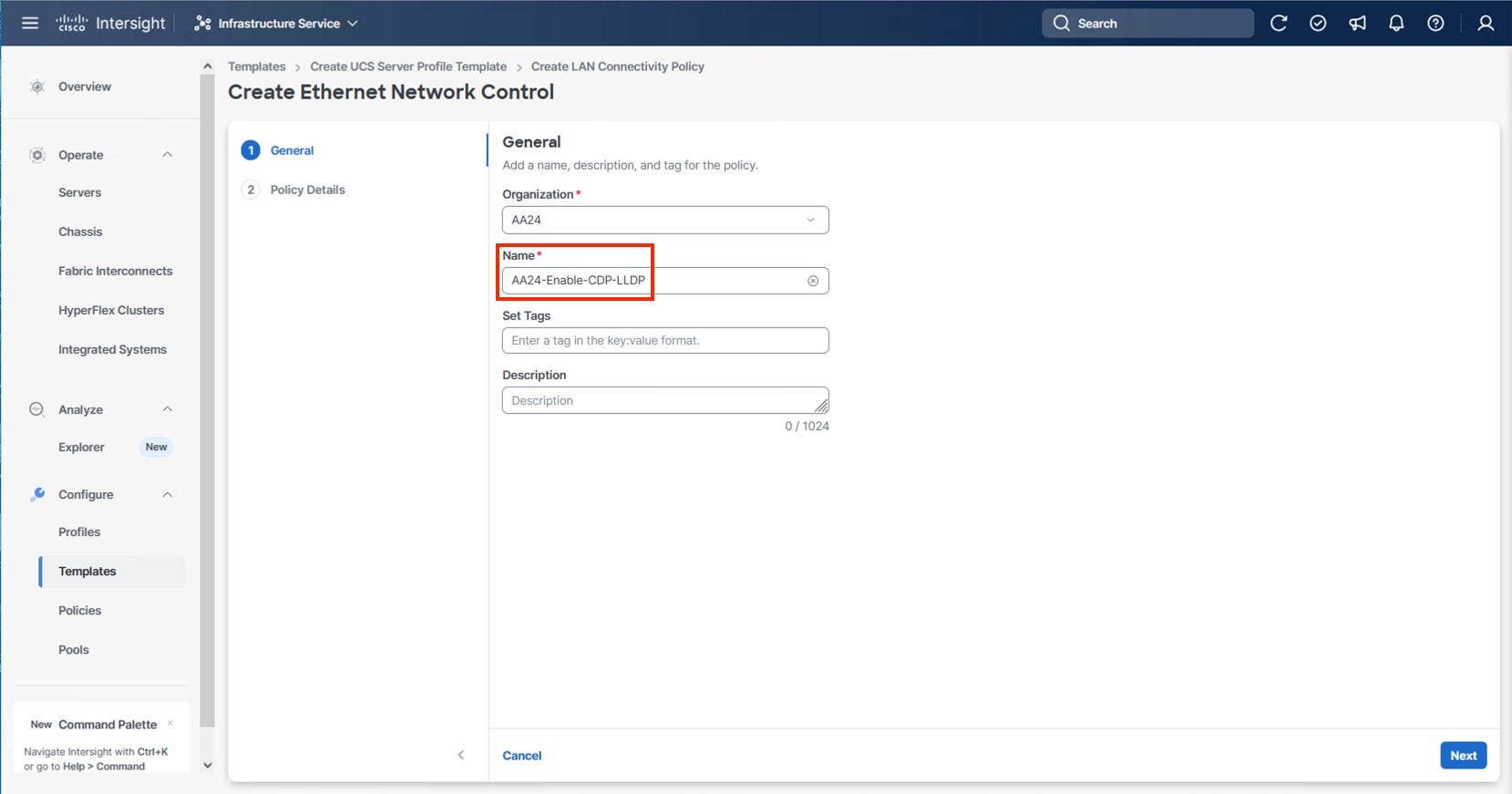

Step 31. For any type of vNIC, click Select Policy under Ethernet Network Control.

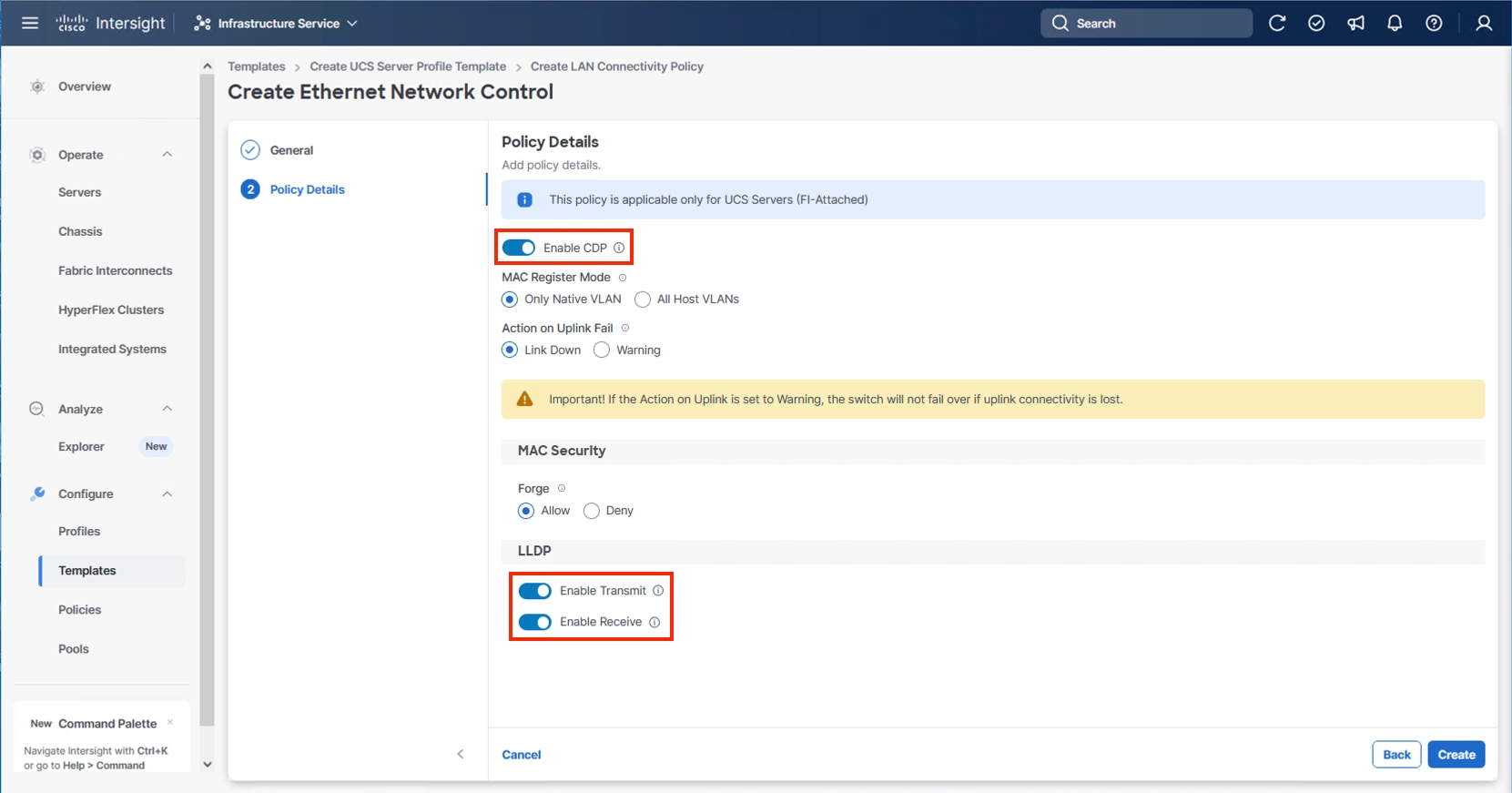

Step 32. Click Create New. If the Ethernet Network Control policy has already been created, select it instead and skip the next two steps.

Step 33. Provide a Name for the policy and click Next.

Step 34. Turn on Enable CDP. Under LLDP, turn on Enable Transmit and Enable Receive. Click Create.

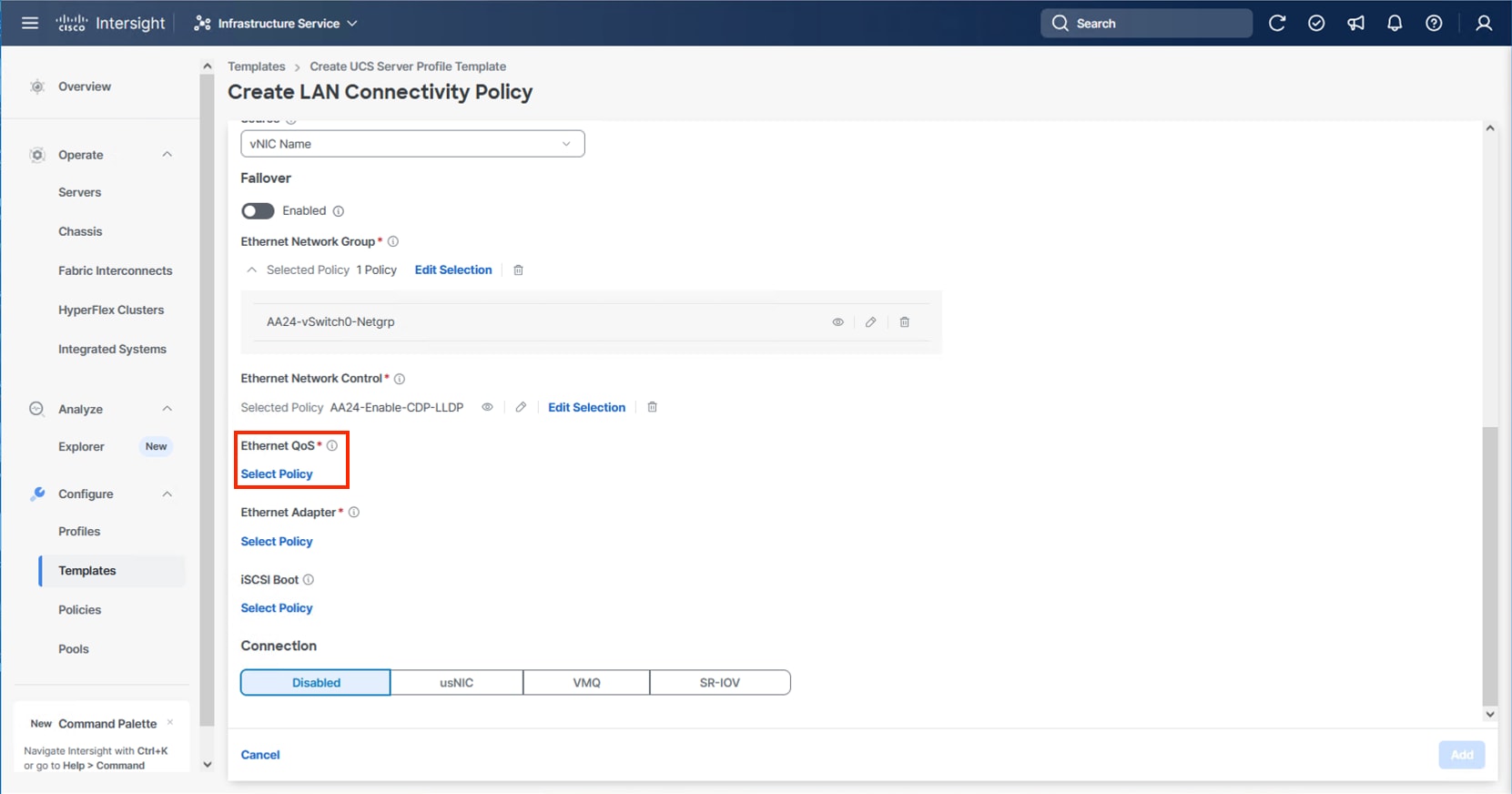

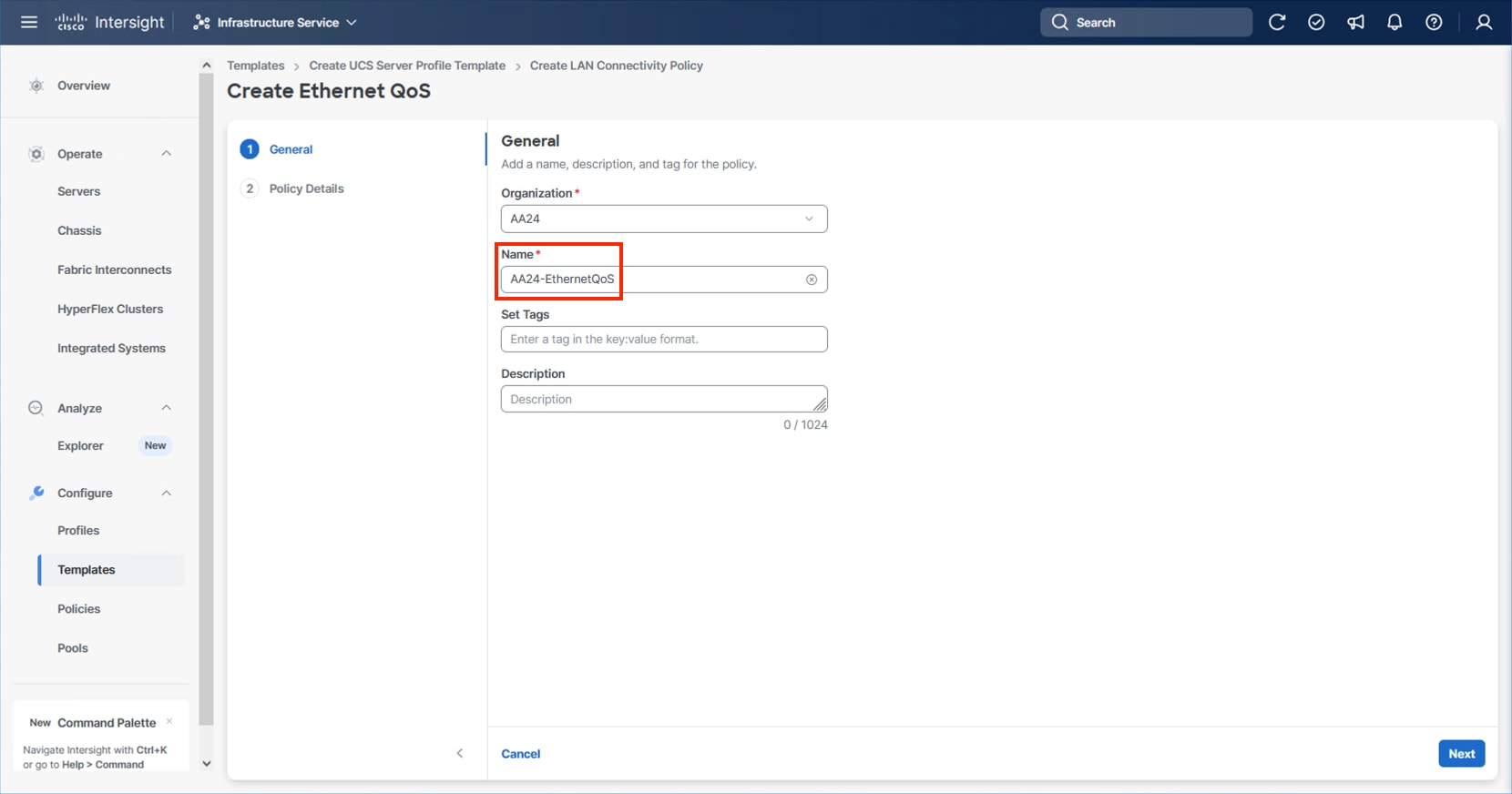

Step 35. For any type of vNIC, click Select Policy under Ethernet QoS.

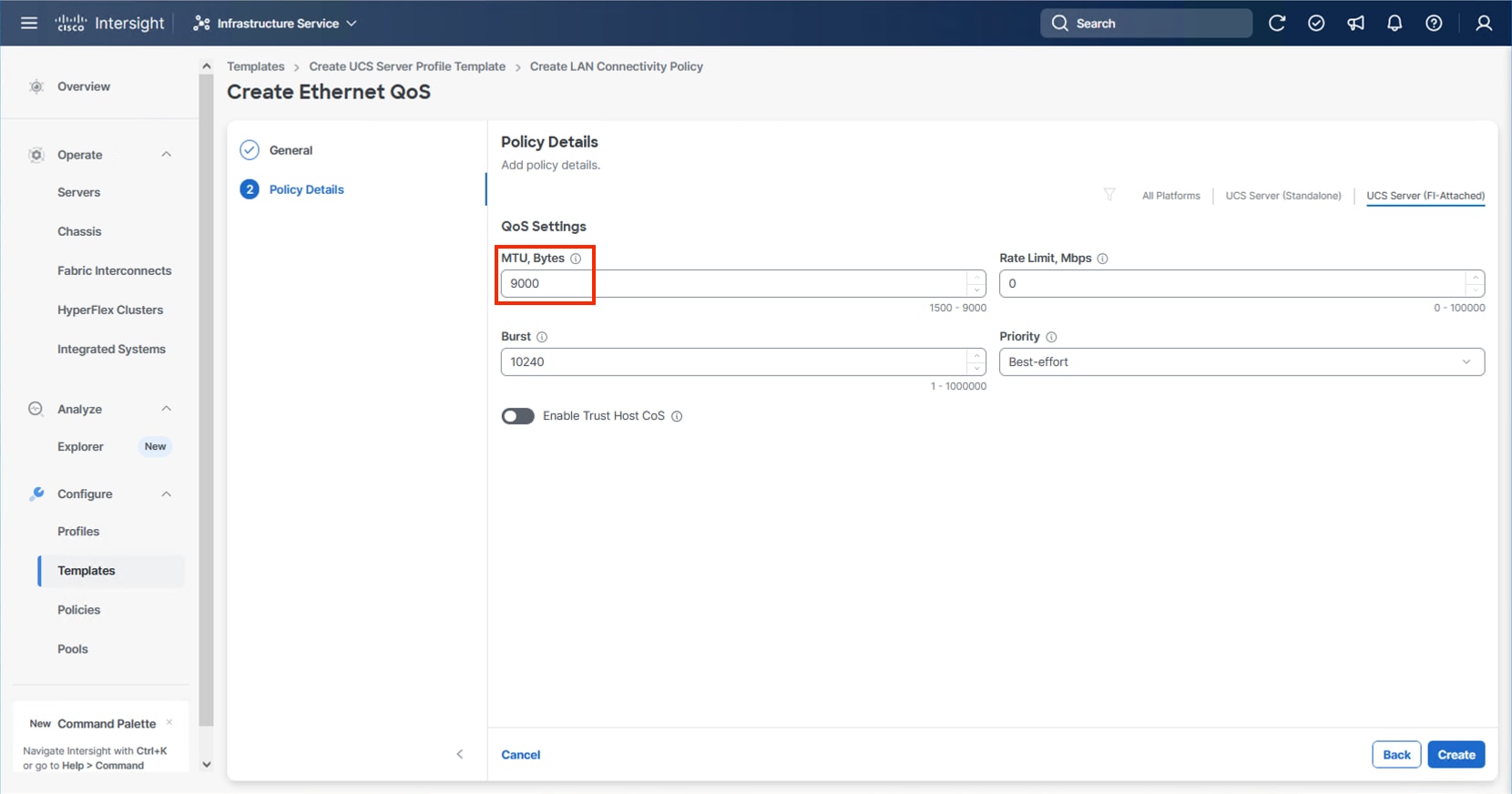

Step 36. Click Create New. If the Ethernet QoS policy has already been created, select it instead and skip the next two steps.

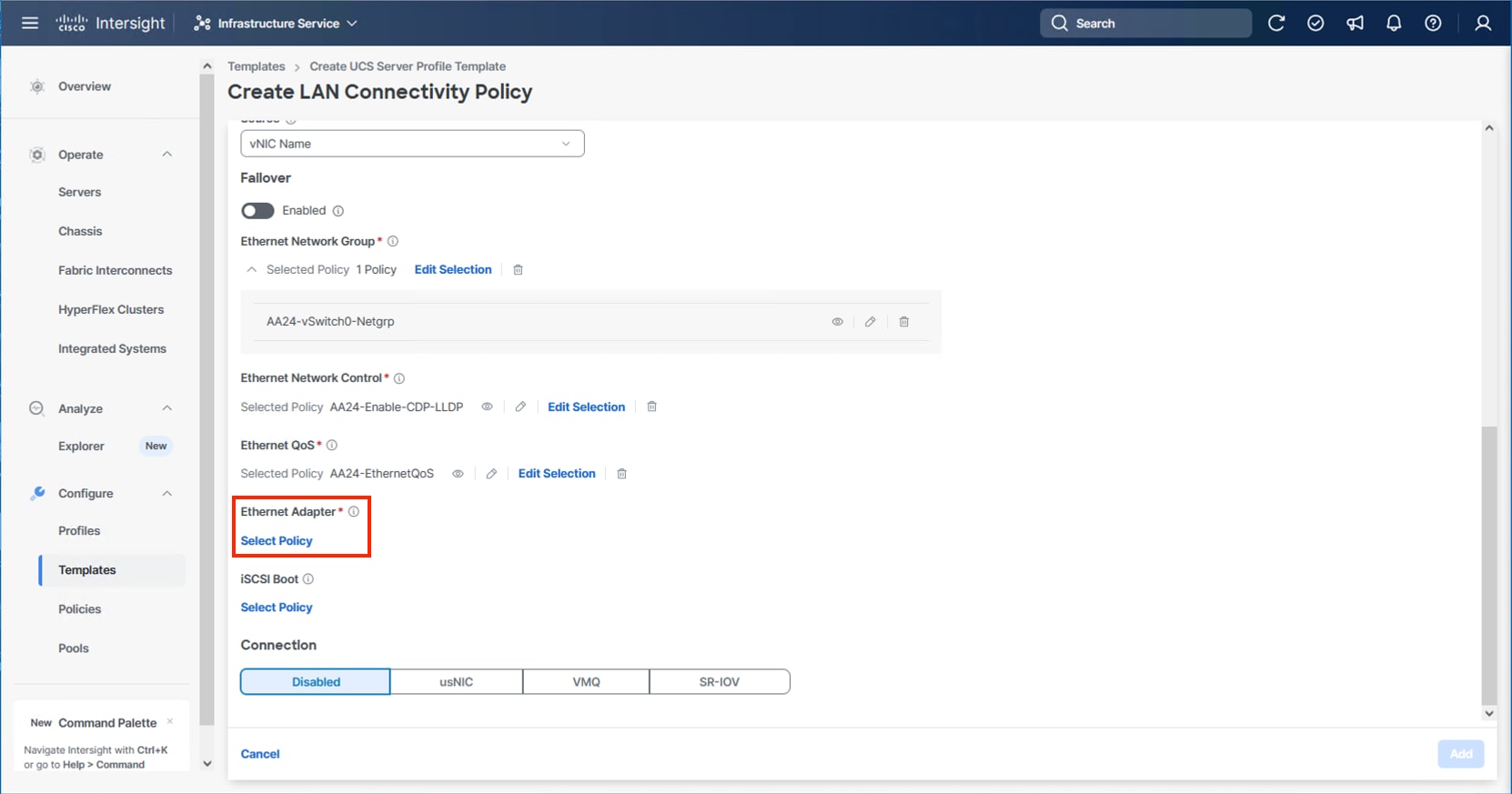

Step 37. Provide a Name for the policy and click Next.

Step 38. Change the MTU, Bytes setting to 9000 and click Create.

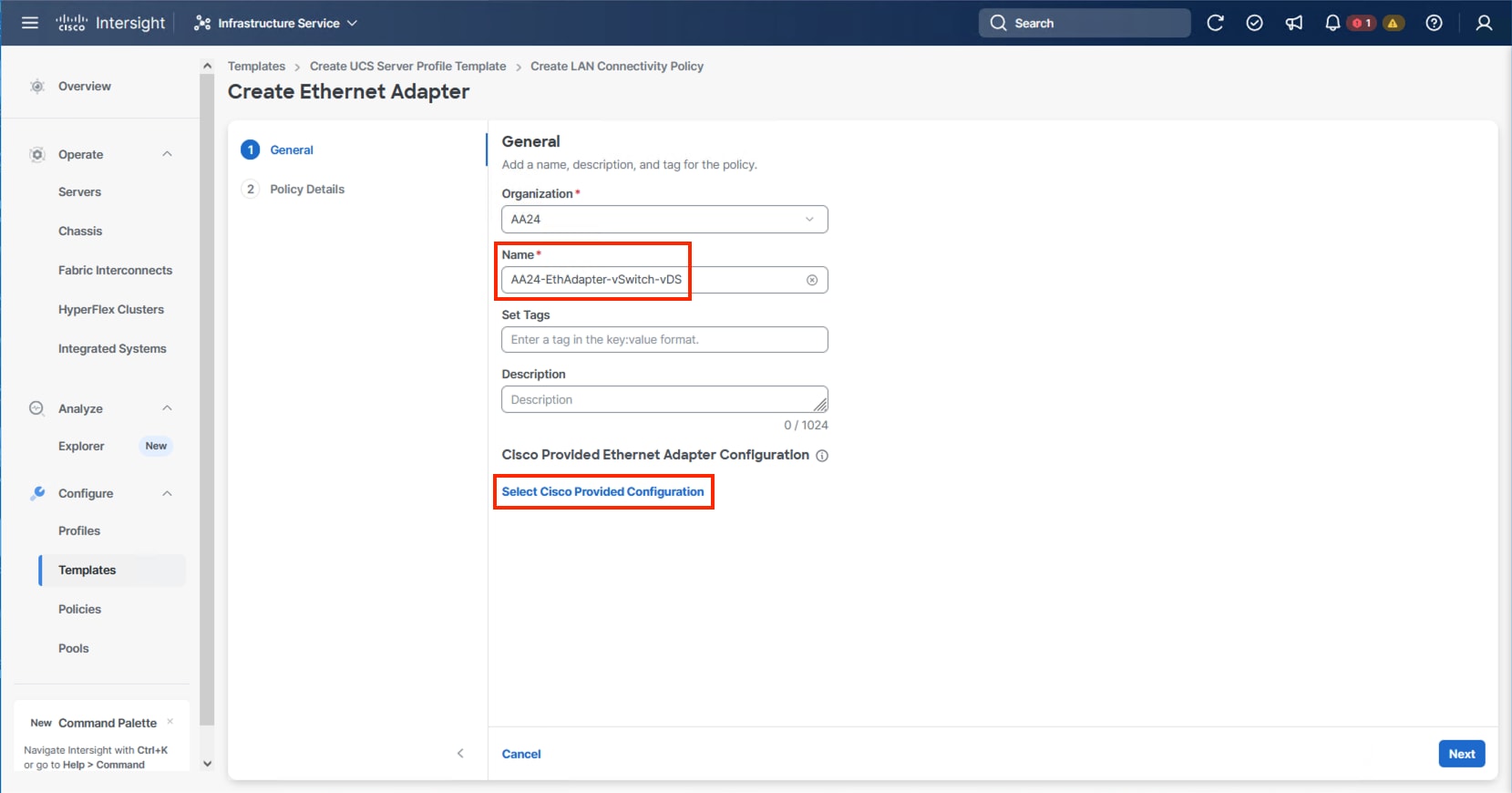

Step 39. For any type of vNIC, click Select Policy under Ethernet Adapter.

Step 40. Click Create New. If the Ethernet Adapter policy has already been created, select it instead and skip the next three steps. Two policies are used: one shared by the vSwitch0 and vDS0 vNICs, and a separate one for the IPStorage vNICs.

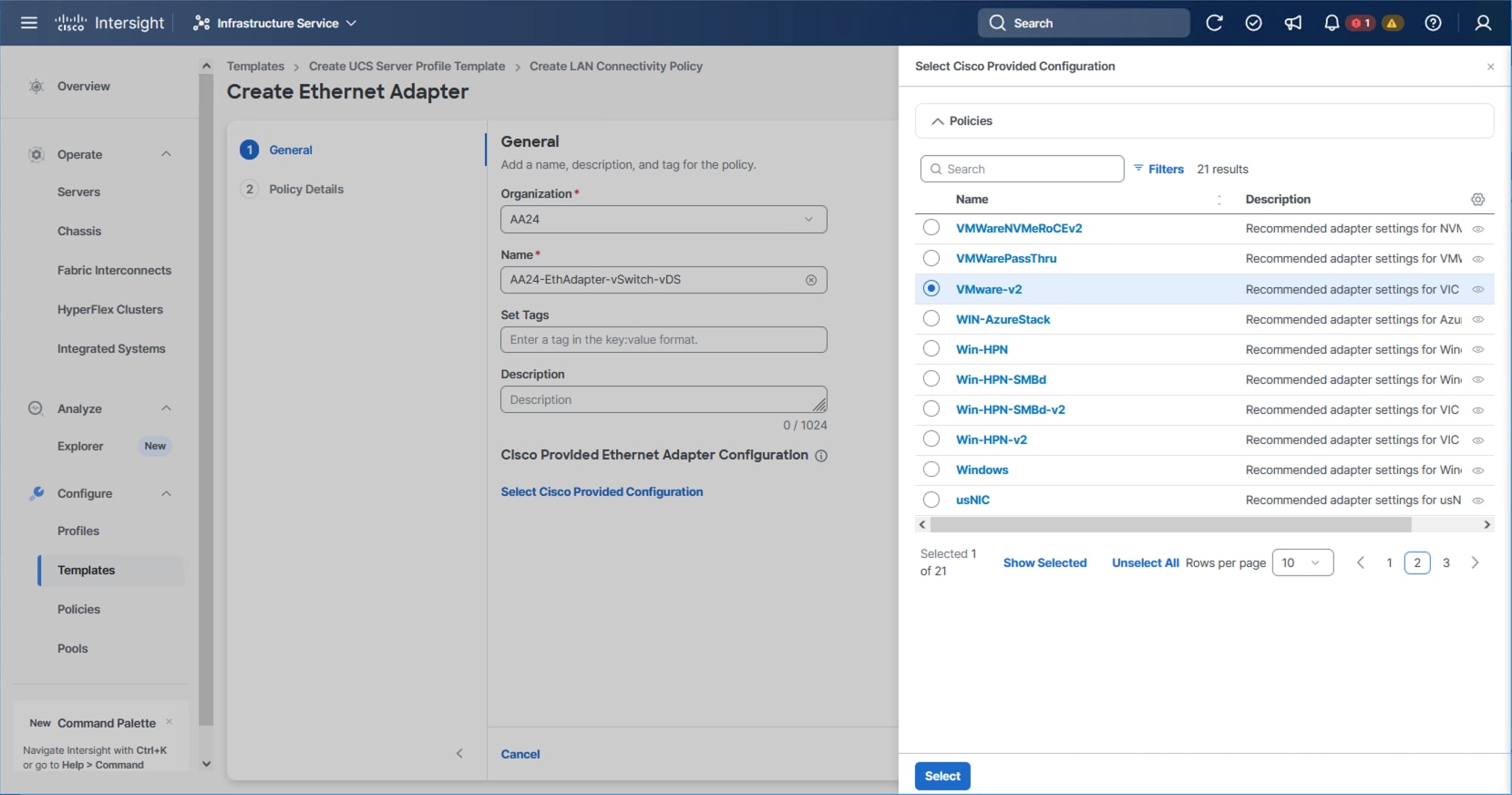

Step 41. To create the vSwitch0 and vDS0 policy, specify a Name for the policy and click Select Cisco Provided Configuration.

Step 42. Select VMware-v2, click Select, and then click Next.

Step 43. Leave all options set to their default settings and click Create.

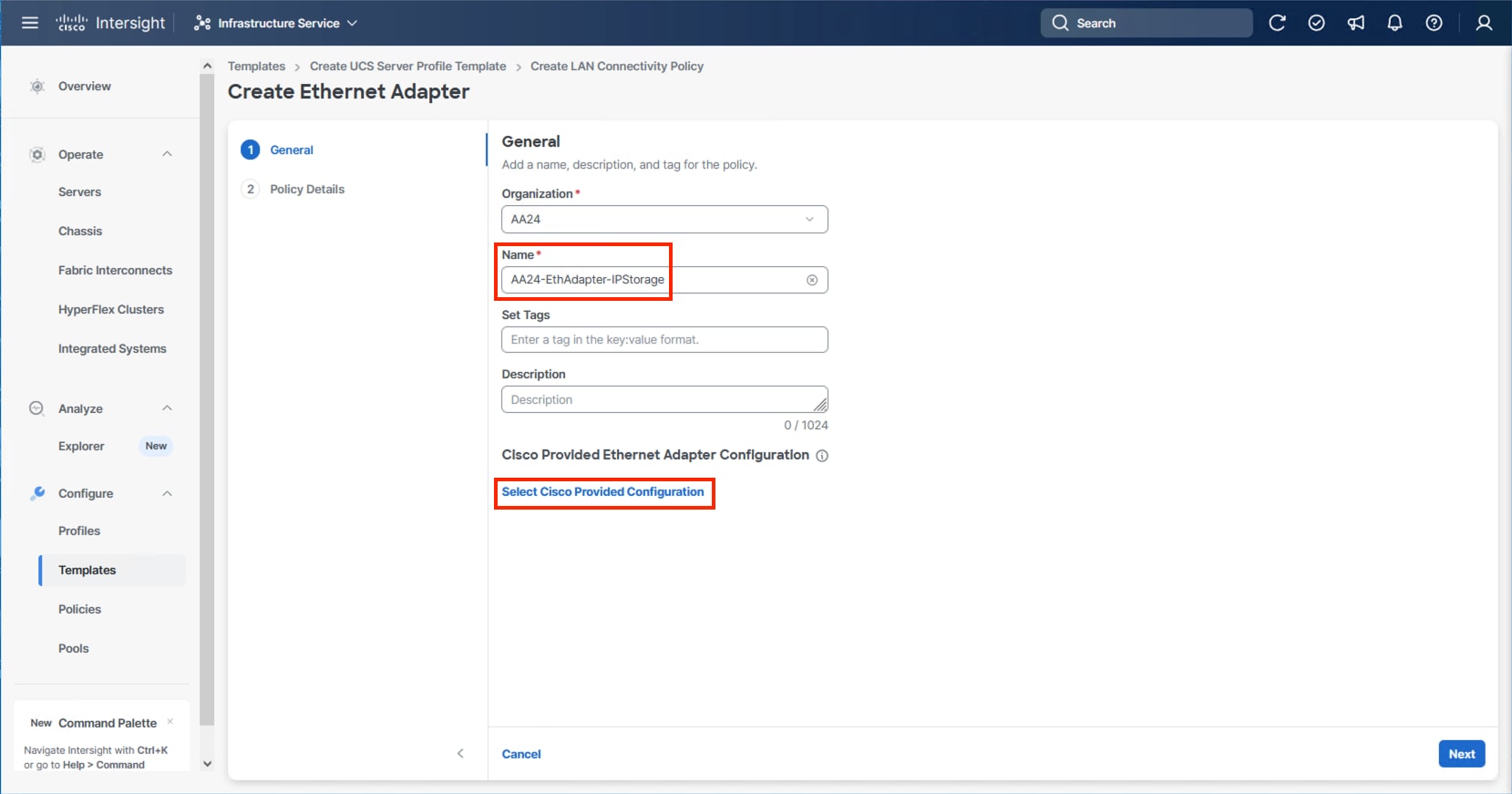

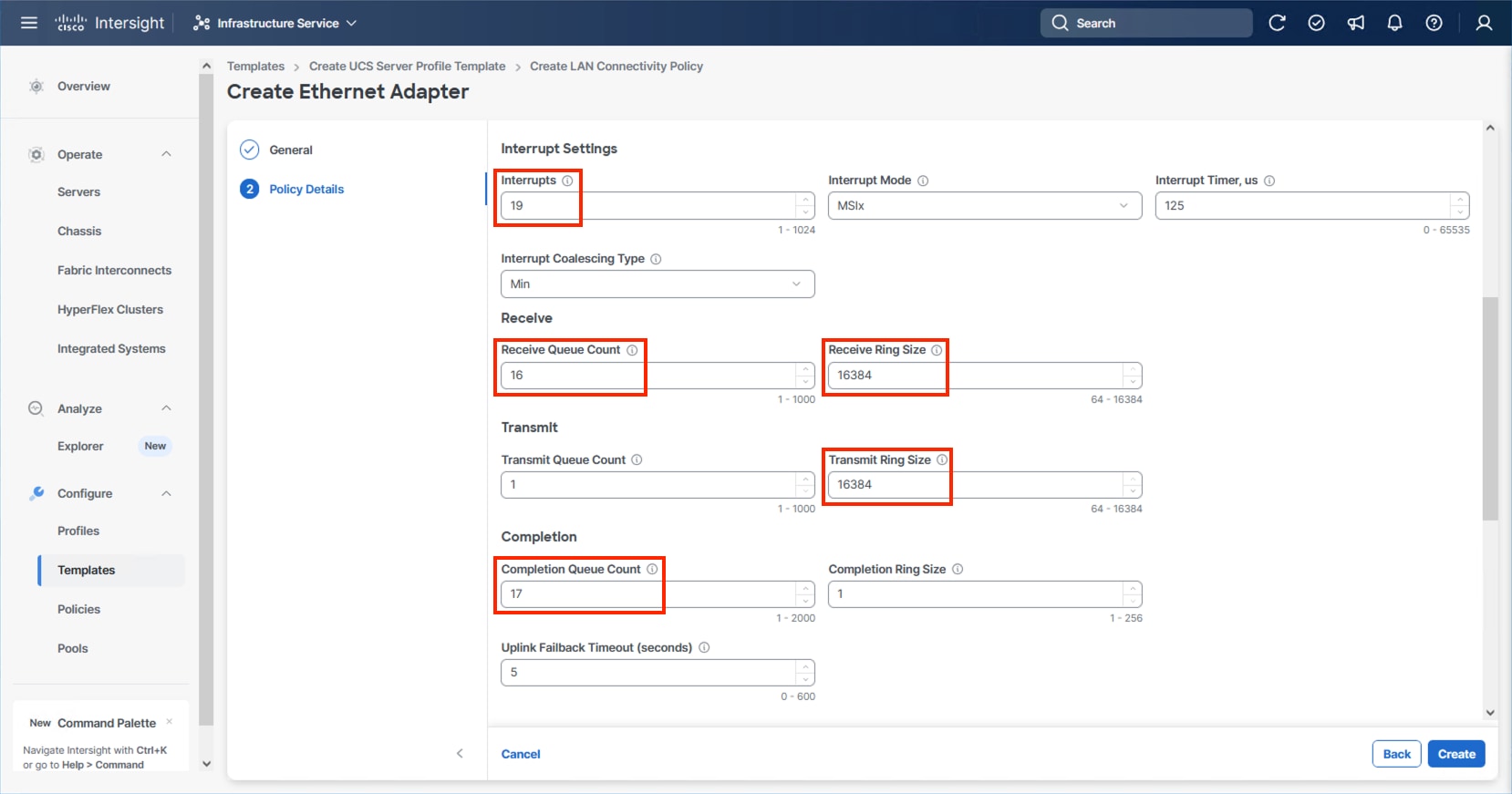

Step 44. To create the IPStorage Ethernet Adapter policy, specify a Name that reflects the higher-traffic settings used in the policy and click Select Cisco Provided Configuration.

Step 45. Select VMware-v2 and click Next.

Step 46. Increase Interrupts to 19, the Receive Queue Count to 16, the Receive Ring Size to 16384, the Transmit Ring Size to 16384, and the Completion Queue Count to 17.

Step 47. Click Create.

Step 48. Verify the vNIC selections and click Add.

Step 49. Repeat steps 3-48 for each additional vNIC, creating the appropriate pools and policies as required.

Step 50. Click Create to finish the LAN Connectivity Policy.

Step 51. In this IP storage-based topology, a SAN Connectivity Policy is not needed. Click Next to continue to the Summary.

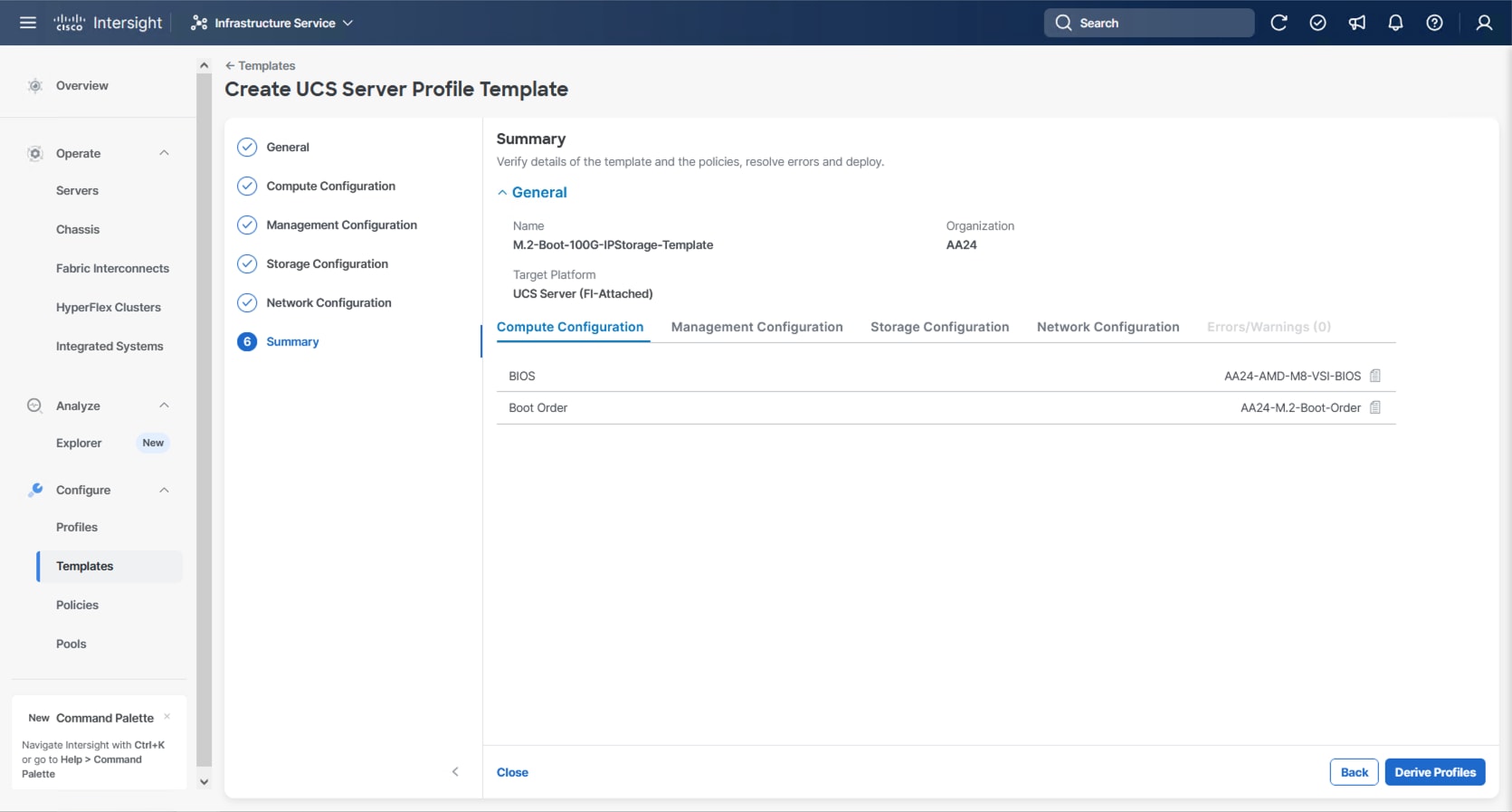

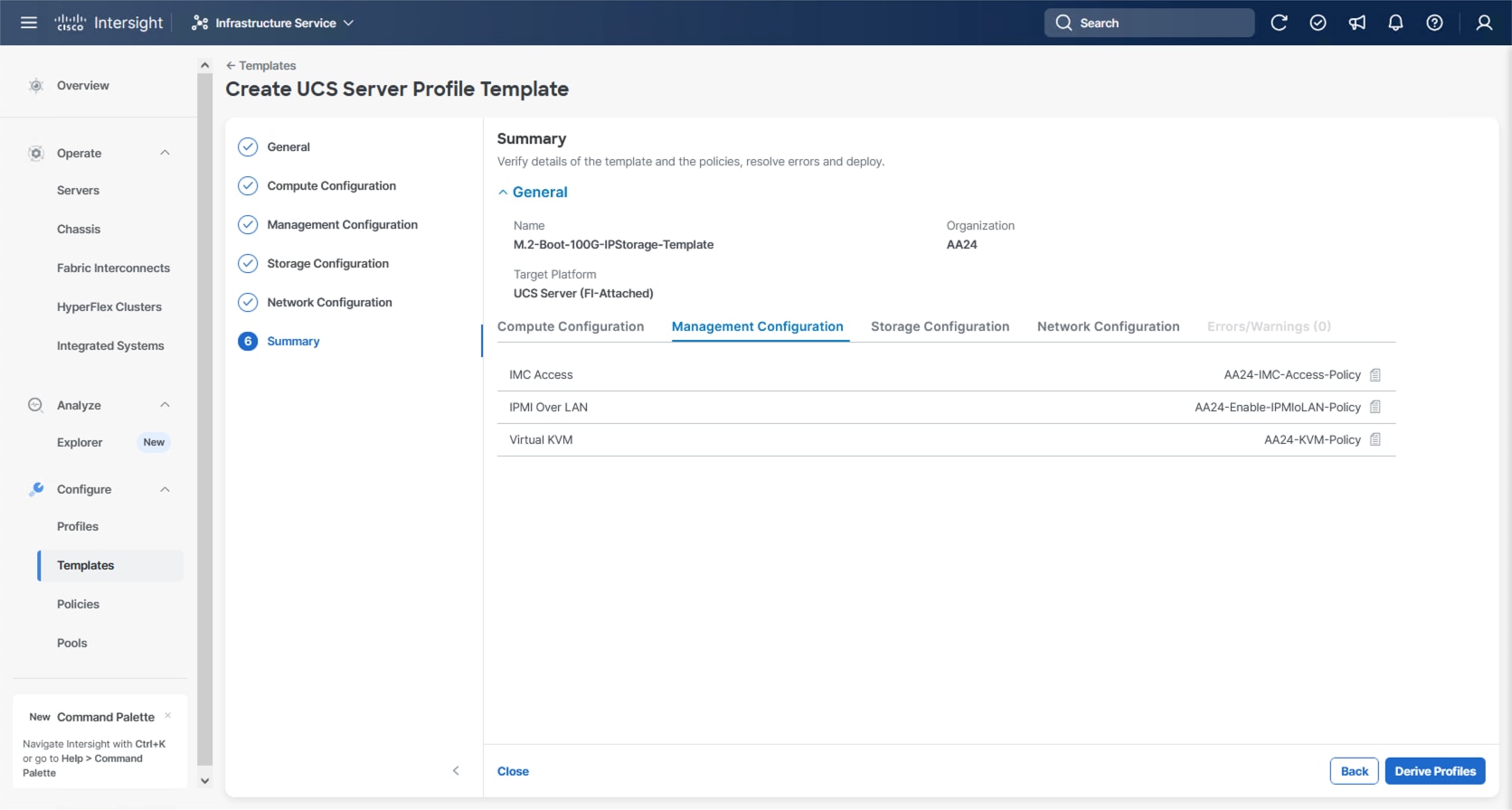

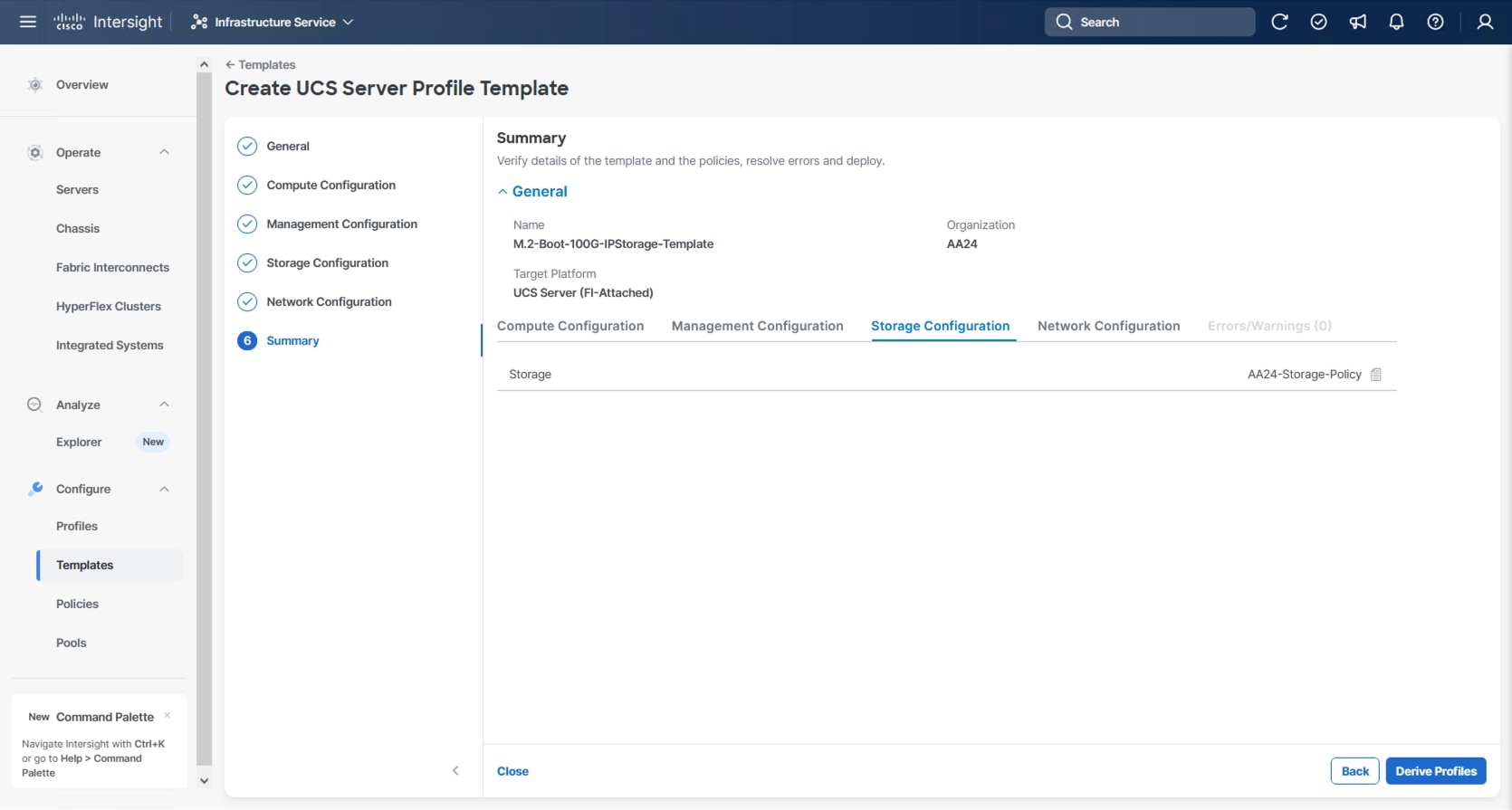

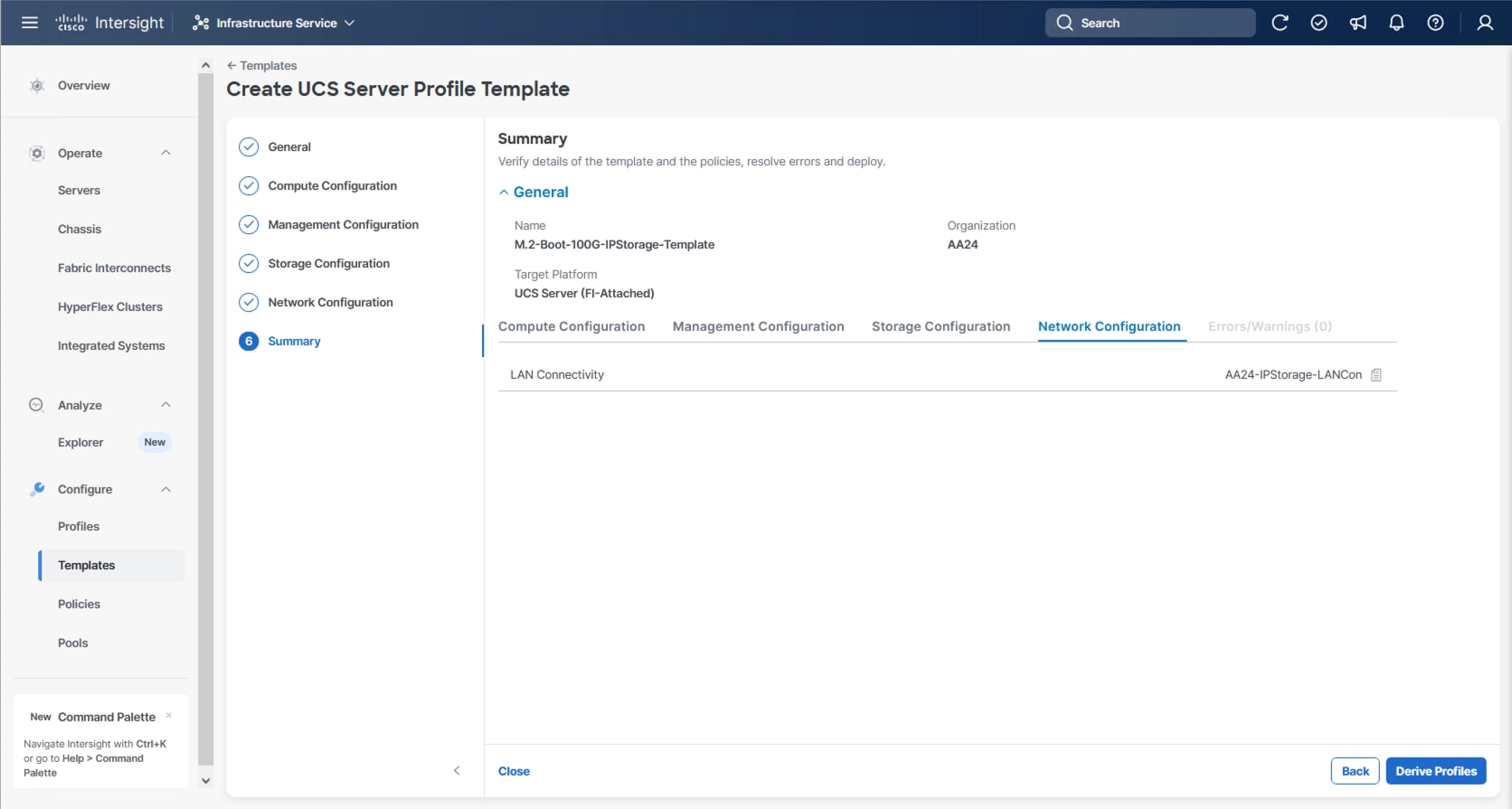
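The IPStorage adapter values in Step 46 fit together under the sizing rule commonly documented for Cisco VIC adapters. Under that rule, the completion queue count equals the receive queue count plus the transmit queue count, and the interrupt count equals the completion queue count plus two. The Python sketch below applies that rule. It assumes the Transmit Queue Count stays at 1, because Step 46 does not change it. Treat both the rule and that transmit value as assumptions, and confirm them against the Cisco VIC documentation for your adapter and firmware.

```python
def vnic_queue_settings(rx_queues: int, tx_queues: int = 1) -> dict[str, int]:
    """Derive completion-queue and interrupt counts from RX/TX queue counts.

    Assumed sizing rule (verify for your VIC model):
      completion queues = RX queues + TX queues
      interrupts        = completion queues + 2
    """
    completion = rx_queues + tx_queues
    return {
        "receive_queue_count": rx_queues,
        "transmit_queue_count": tx_queues,
        "completion_queue_count": completion,
        "interrupts": completion + 2,
    }


print(vnic_queue_settings(rx_queues=16))
```

If you change the Receive Queue Count later, recompute the other two values together. A mismatched completion queue or interrupt count is an easy mistake to make when you edit only one field.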

Procedure 2. Review Summary

Step 1. In the Summary screen, verify that the intended policies are mapped to the appropriate settings.

Cisco UCS IMM Setup Completion

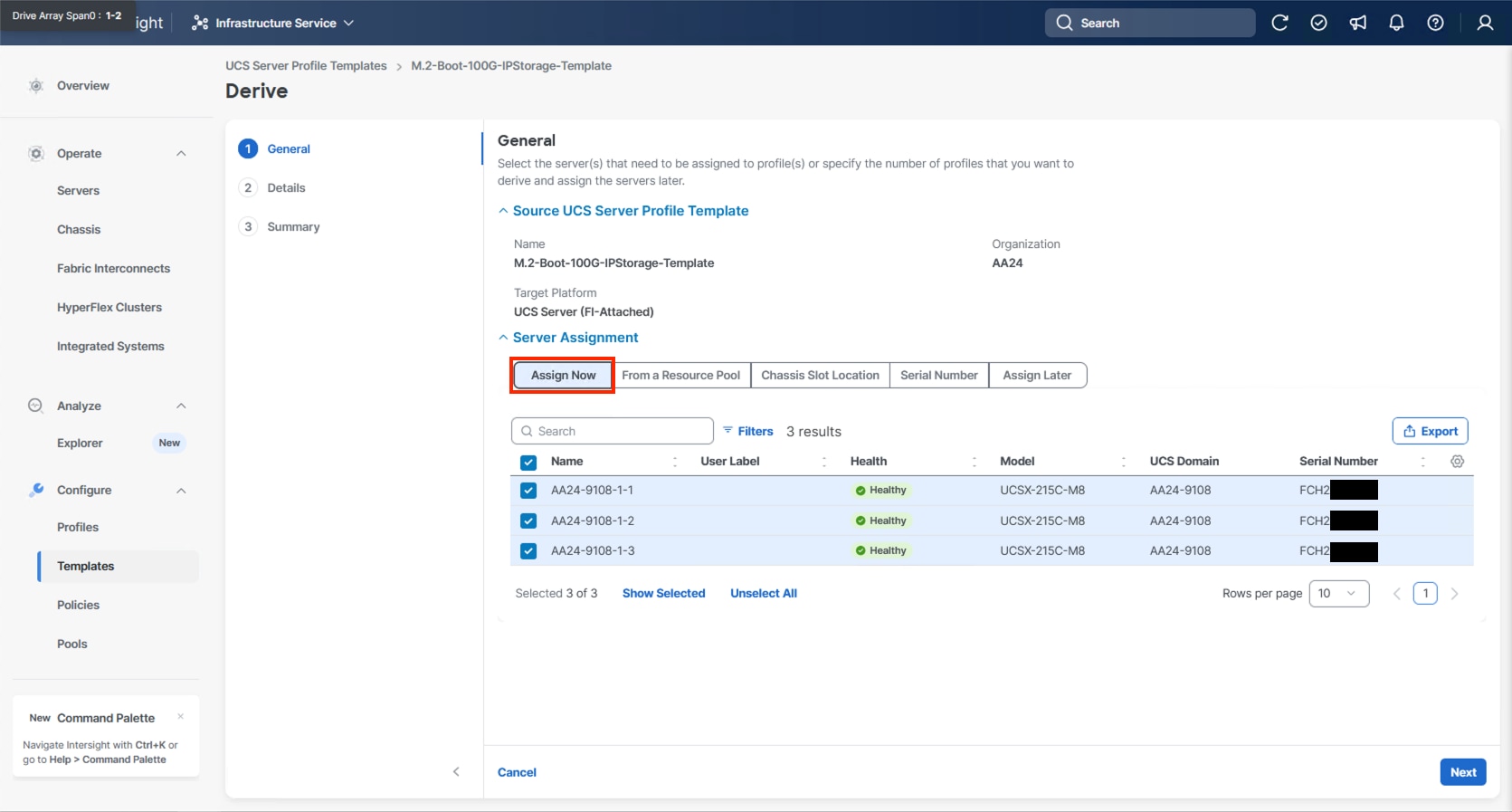

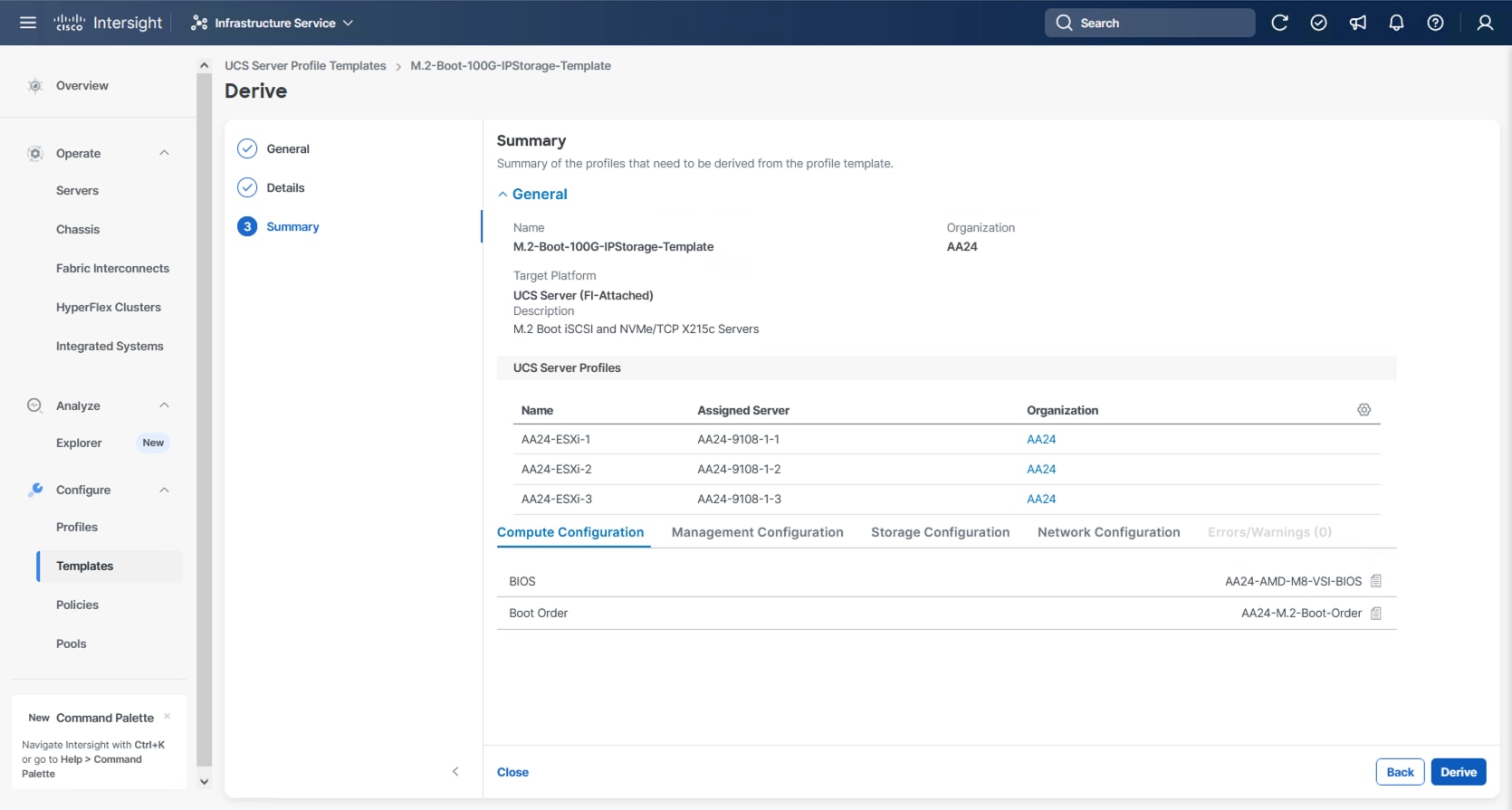

Procedure 1. Derive Server Profiles

Step 1. From the Server profile template Summary screen, click Derive Profiles.

Note: This action can also be performed later by navigating to Templates, clicking “…” next to the template name and selecting Derive Profiles.

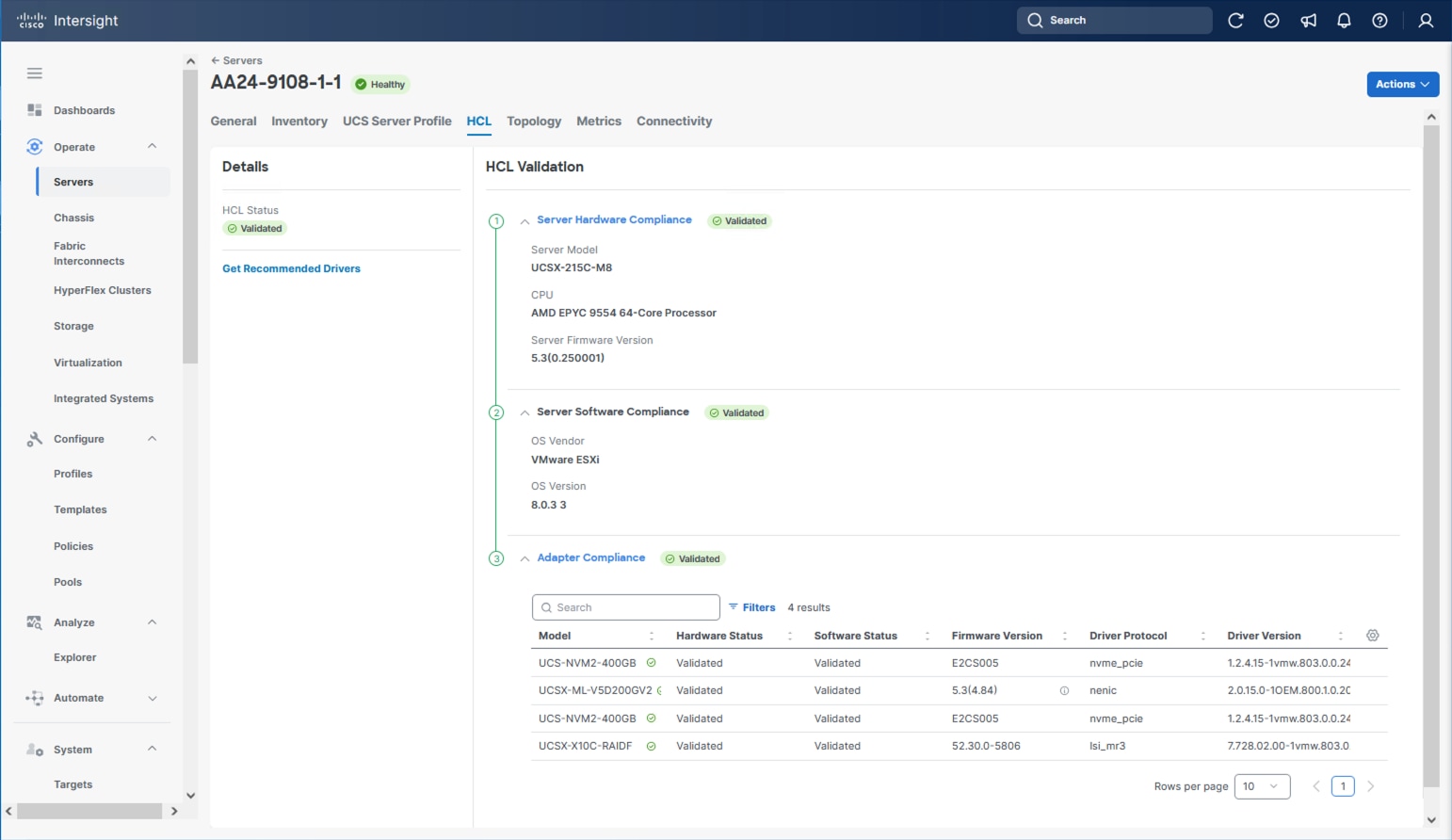

Step 2. Under Server Assignment, select Assign Now and select Cisco UCS X215c M8 server(s). You can select one or more servers depending on the number of profiles to be deployed. Optionally, provide a Model filter to exclude additional connected servers as required.

Step 3. Click Next.

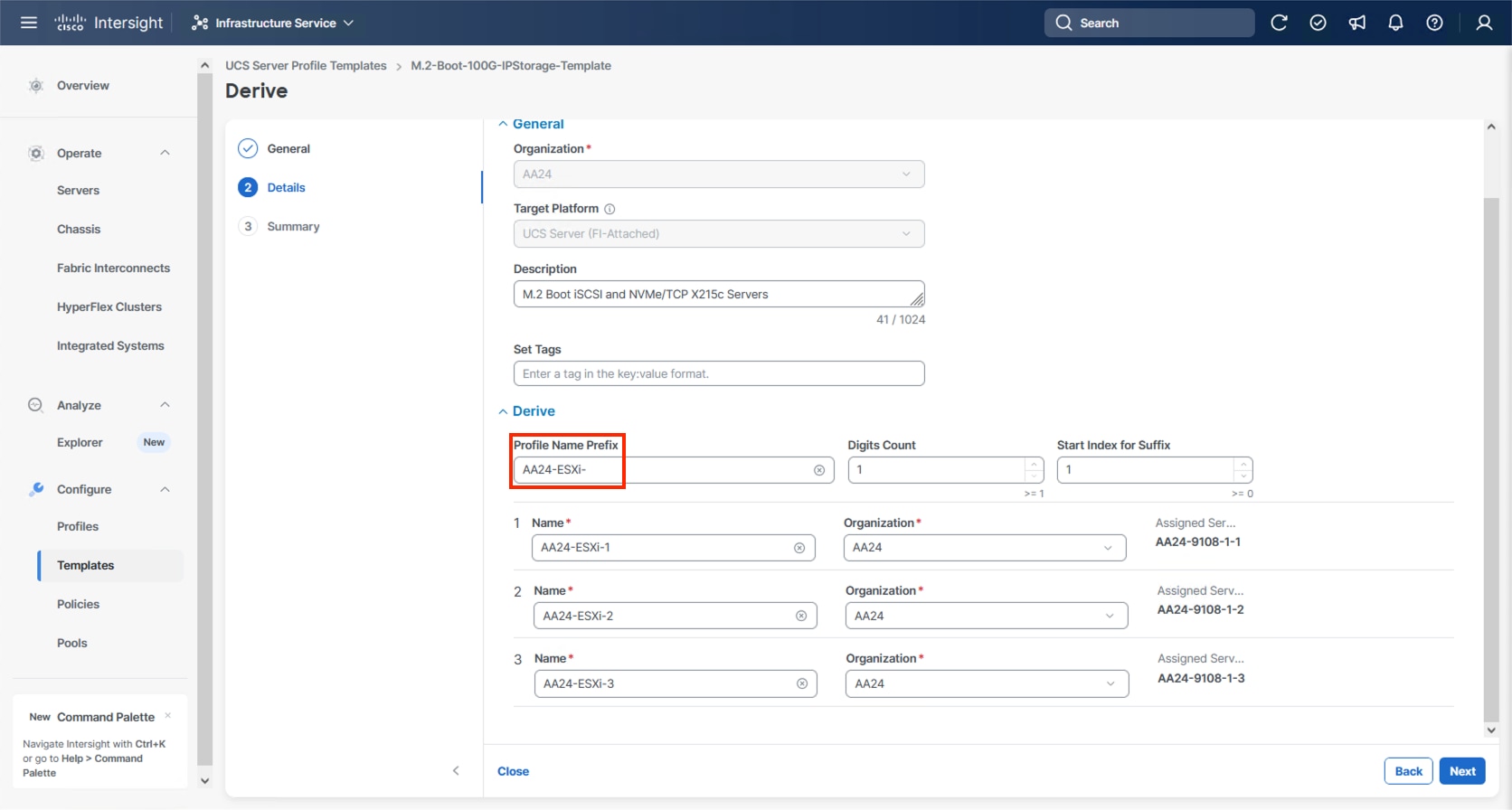

Note: Cisco Intersight will fill in the default information for the number of servers selected (3 in this case).

Step 4. Adjust the fields as needed, providing an appropriate Profile Name Prefix.

Note: It is recommended to use the server hostname for the Server Profile name.

Step 5. Click Next.

Step 6. Verify the information and click Derive to create the Server Profile(s).

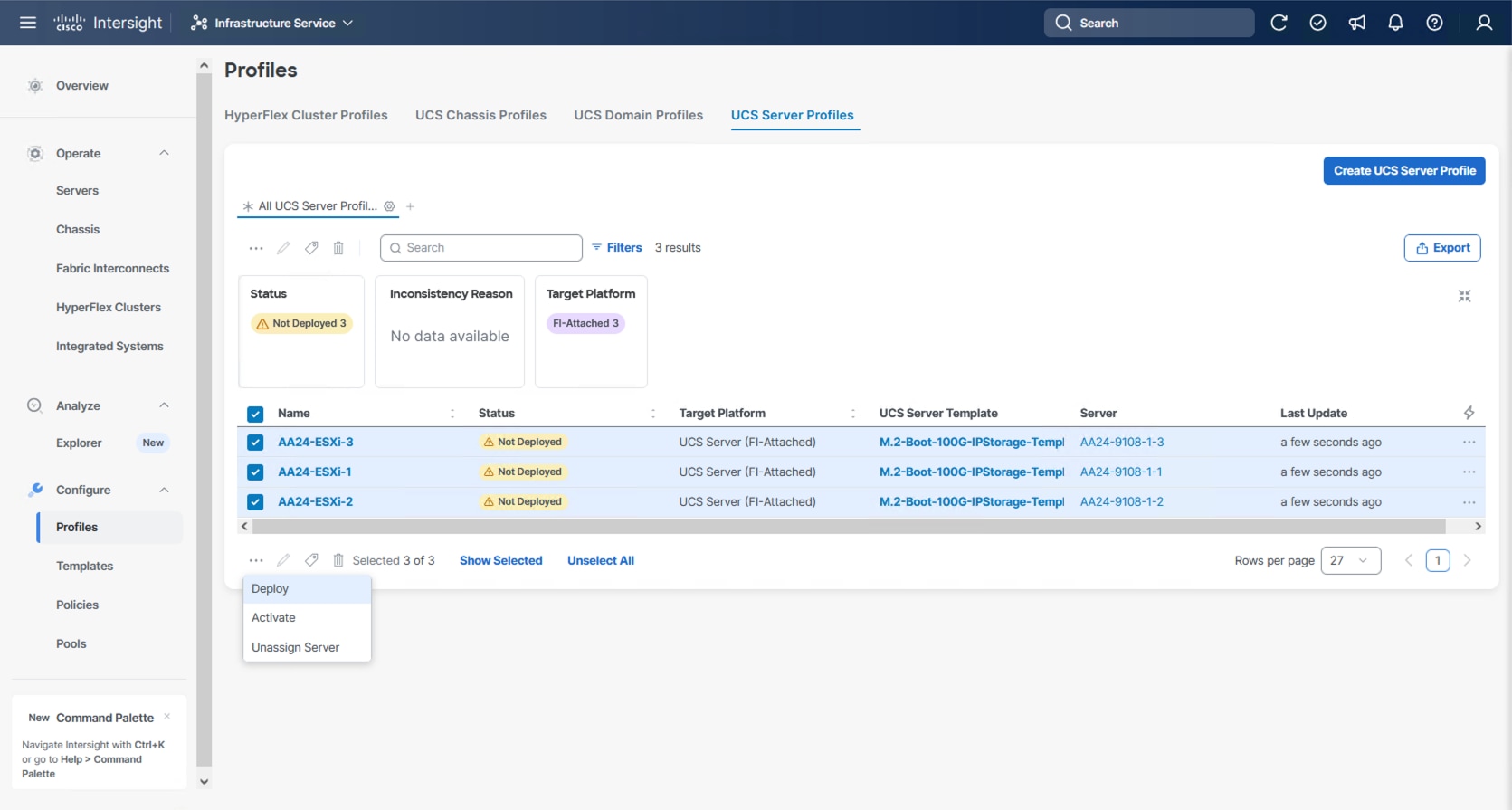

Step 7. Go to Infrastructure Service > Configure > Profiles > UCS Server Profiles, select the profile(s) just created, click the … at the top of the column, and select Deploy. Select Reboot Immediately to Activate and click Deploy.

Step 8. Cisco Intersight starts deploying the server profile(s). Applying all the policies takes some time. Go to the Requests tab to see the progress.

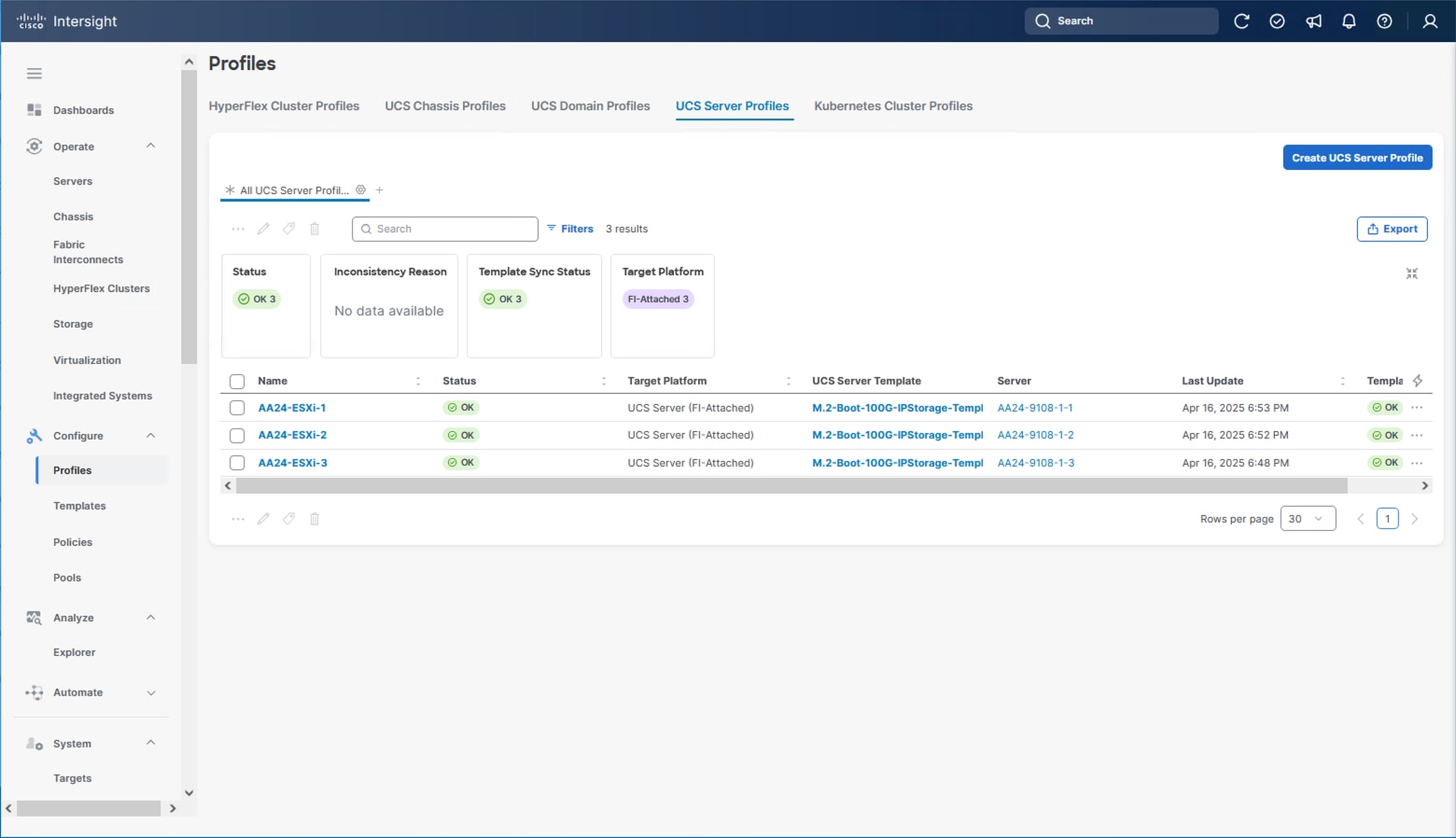

When the Server Profile(s) are deployed successfully, they will appear under the Server Profiles with the status of OK.

Tunneled KVM Setting within System

The additional System settings in Intersight that are needed to fully enable Tunneled KVM were described in the Virtual KVM policy procedure of the Server Profile Template creation.

Note: If these settings have not been configured yet, complete the following procedure.

Procedure 1. Tunneled KVM Setting

Step 1. Go to System > Settings > Security and Privacy and click Configure.

Step 2. Turn on Allow Tunneled vKVM Launch and Allow Tunneled vKVM Configuration.

Step 3. Click Save to apply the changes.

Hitachi VSP One Block Storage Configuration

This chapter contains the following sections:

● NVMe/TCP Ports on Hitachi VSP One Block

● Hitachi Dynamic Provisioning Pool for LDEVs for UCS Servers

● NVM Subsystem, LDEV, and Host Access

Hitachi VSP One Block NVMe/TCP ports must be configured according to best practices. To access VSP One Block Administrator, you must use the built-in service IP. This service IP can be obtained by contacting your authorized Hitachi Vantara representative.

NVMe/TCP Ports on Hitachi VSP One Block

This section provides the procedure to configure NVMe/TCP ports on Hitachi VSP One Block.

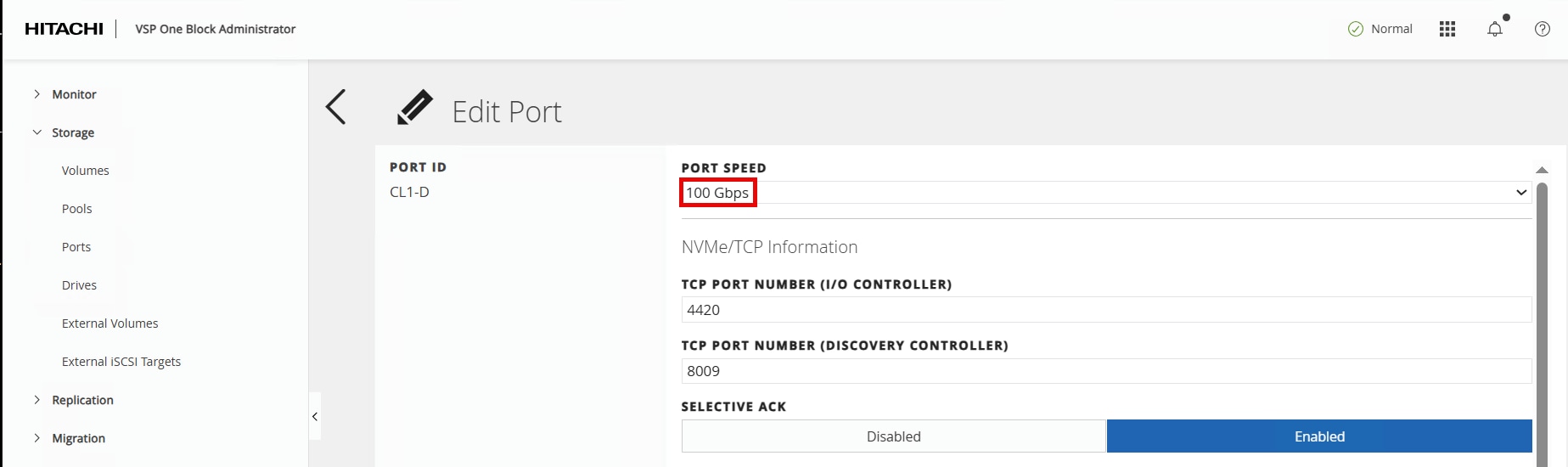

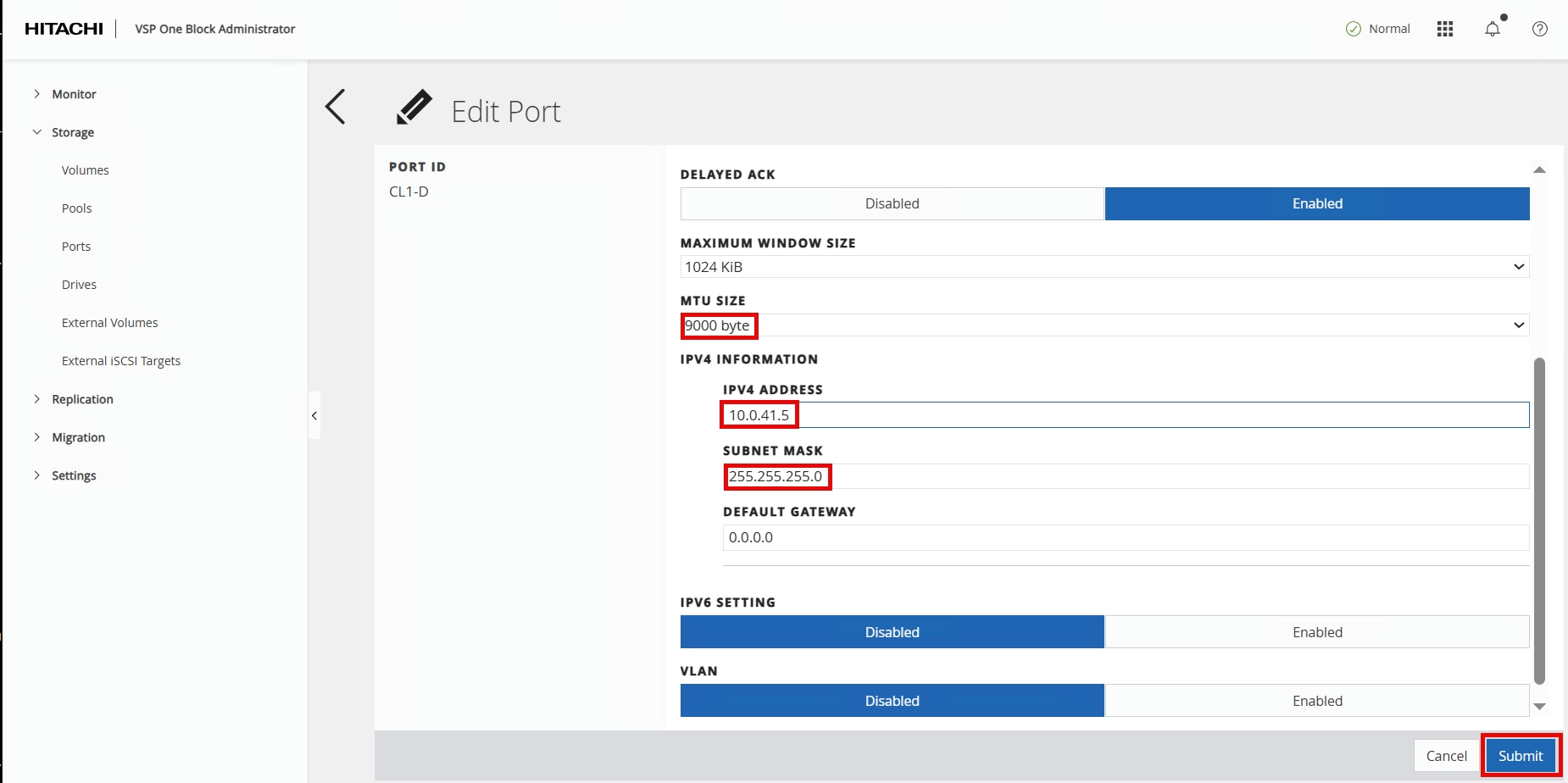

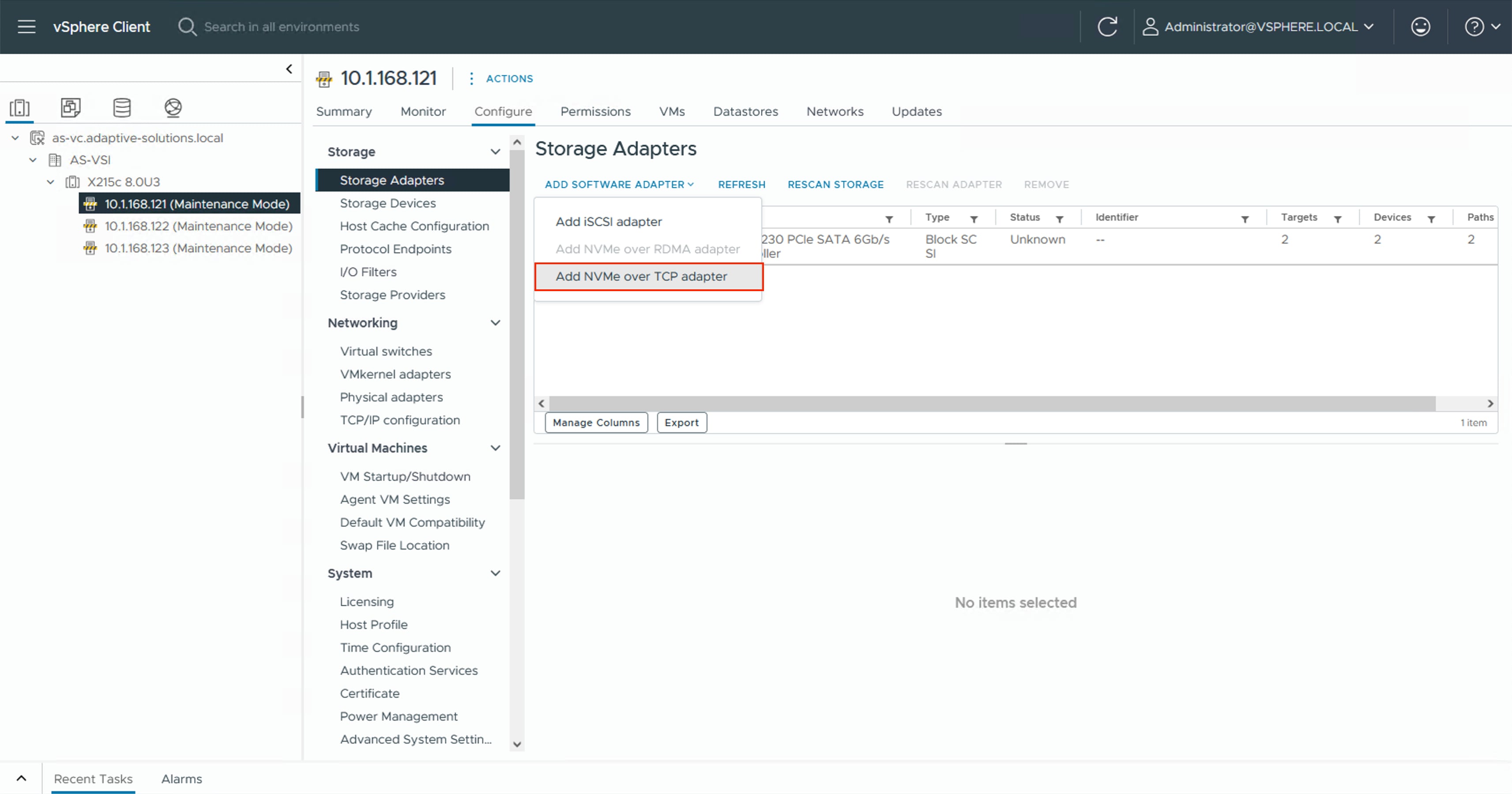

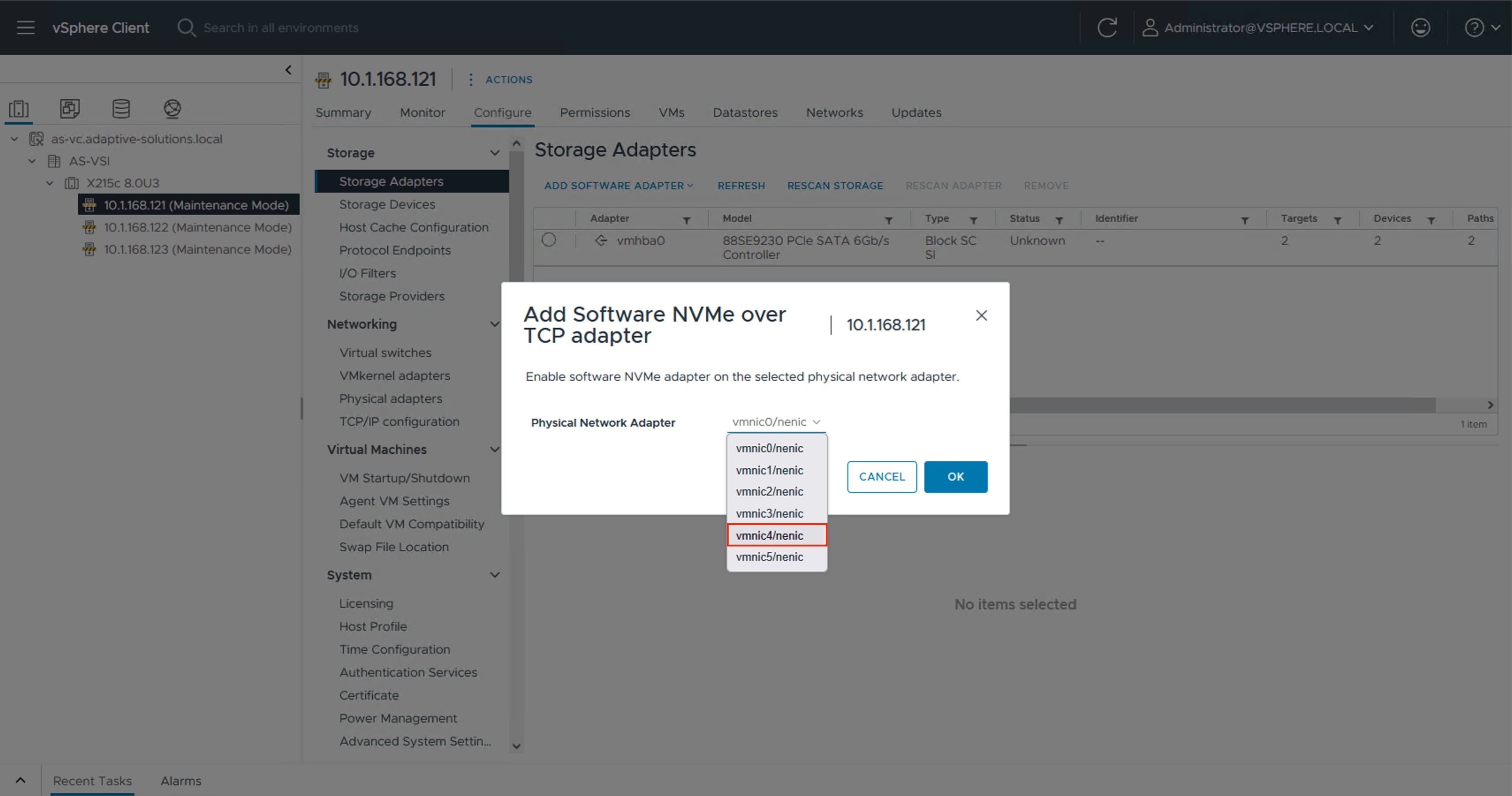

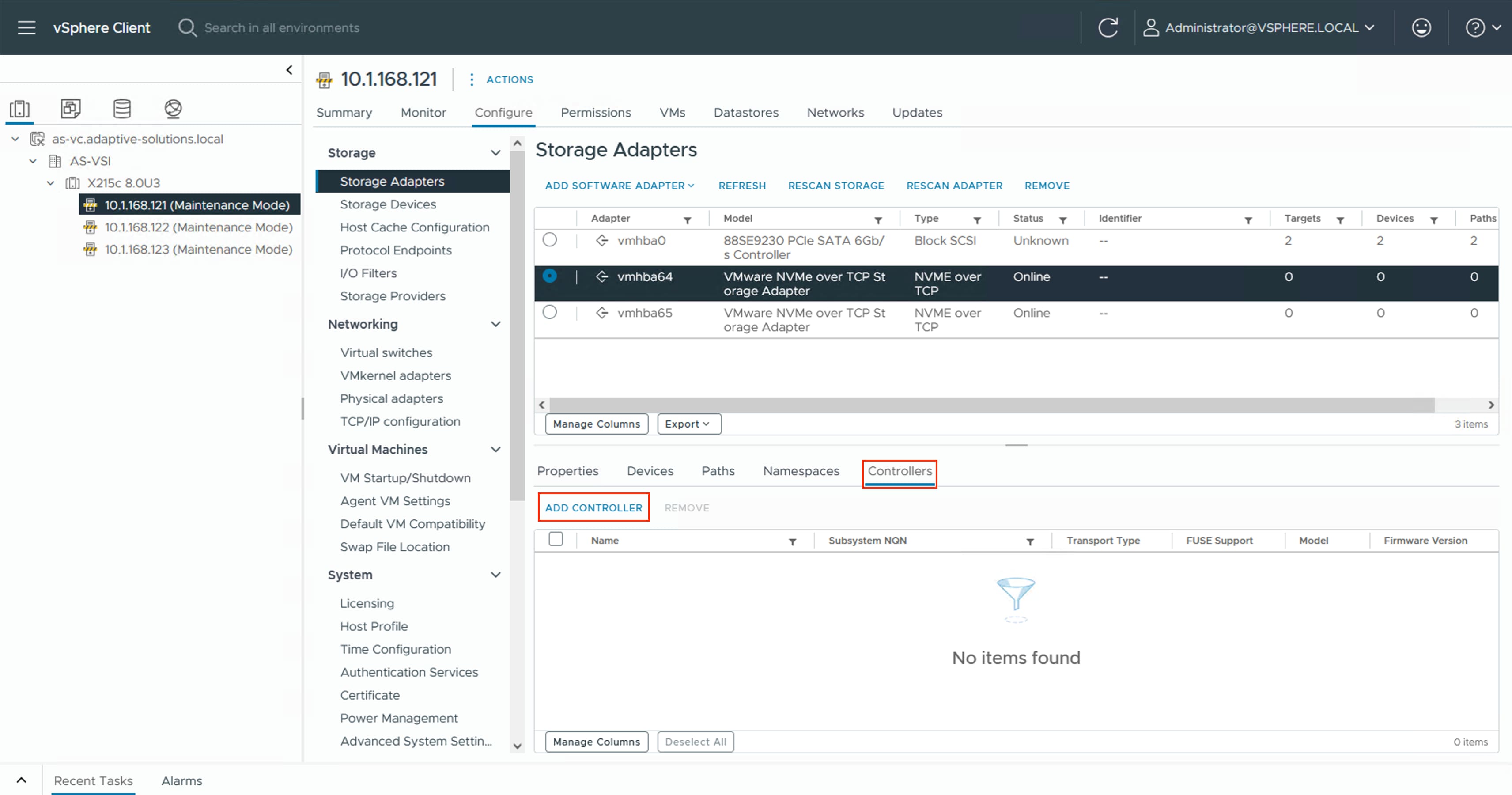

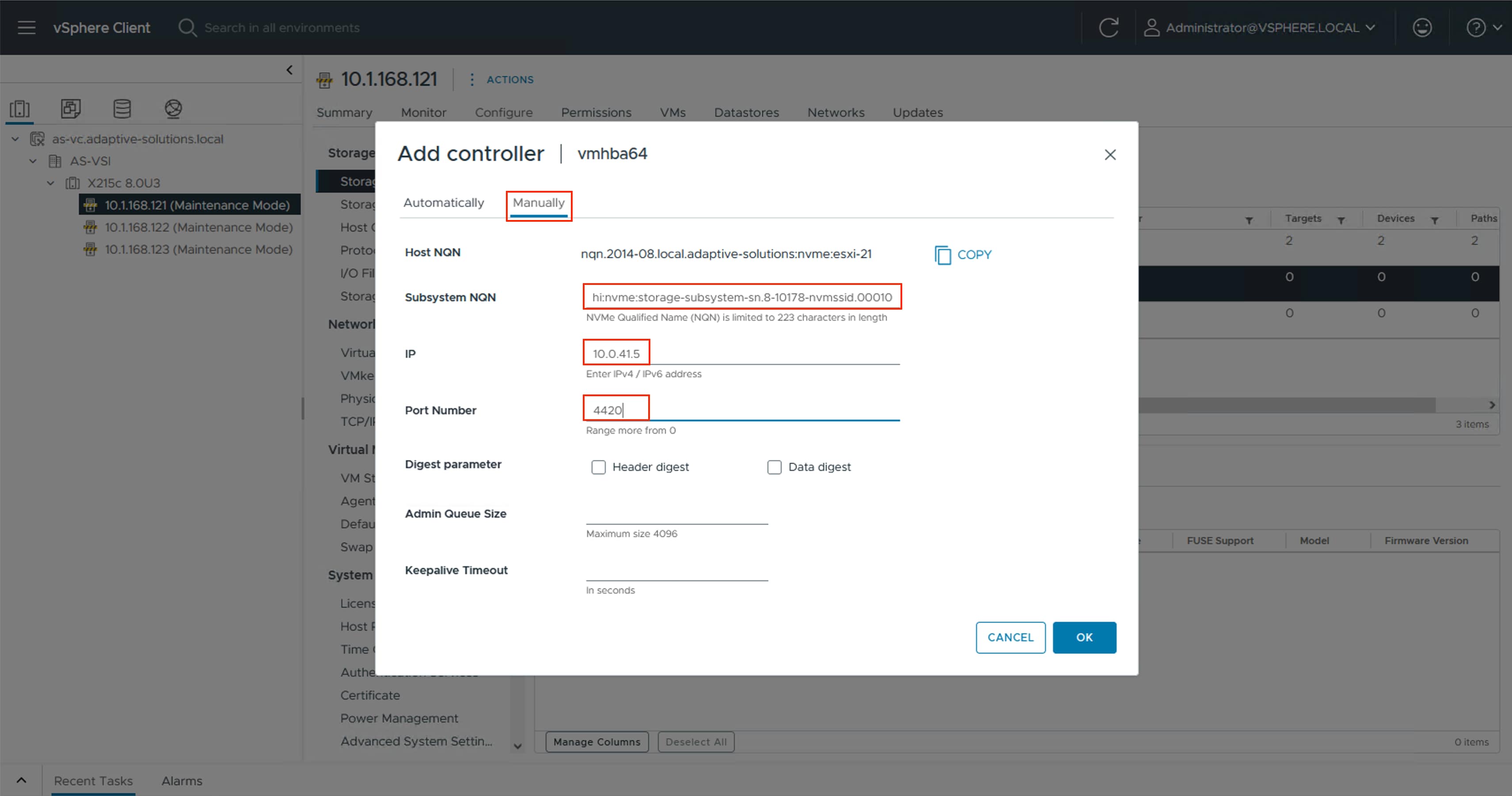

Procedure 1. Configure NVMe/TCP Ports on Hitachi VSP One Block

Step 1. Log in to VSP One Block Administrator by navigating to the service IP in a web browser. Enter the required credentials and then click Log In.

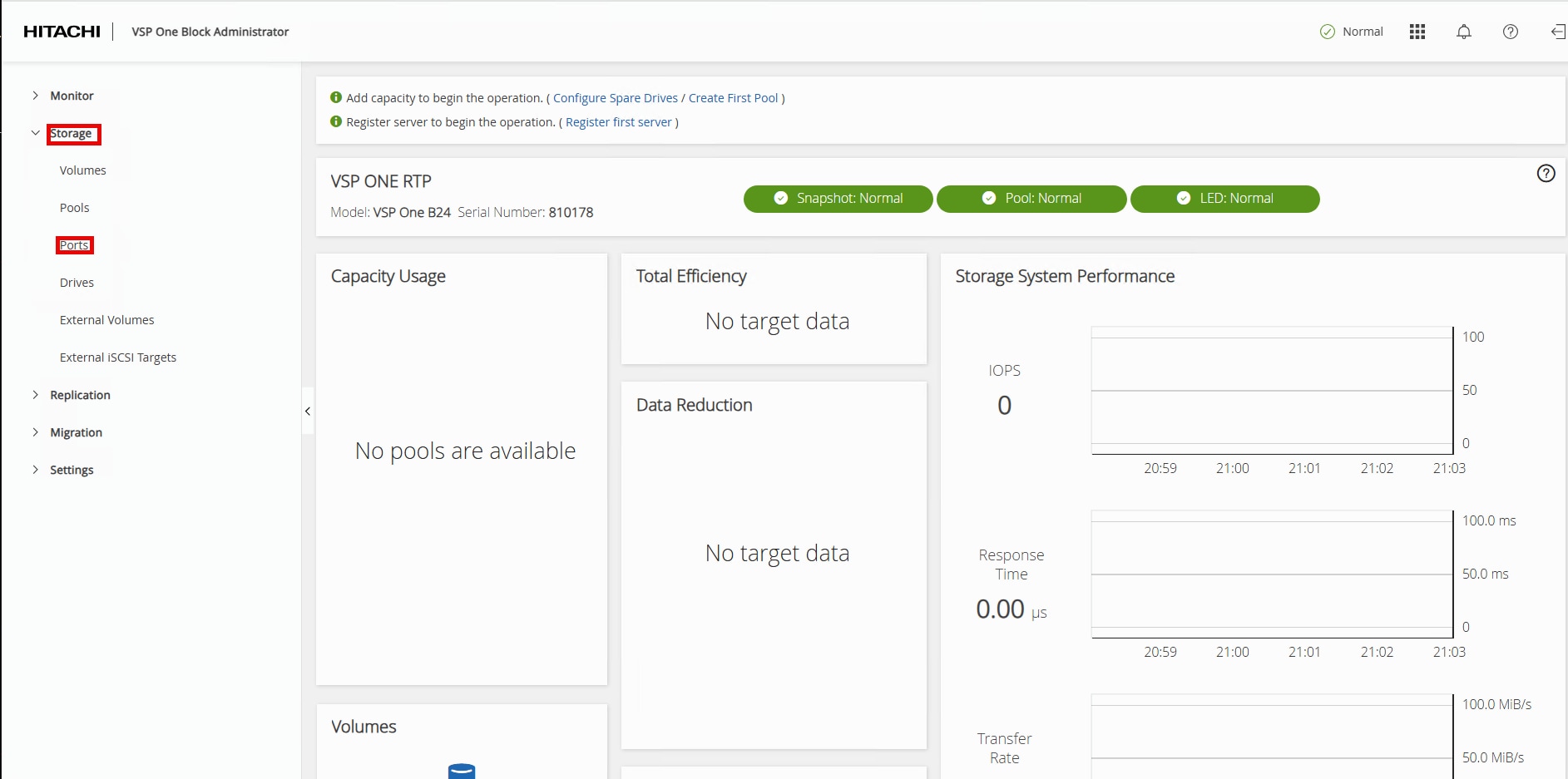

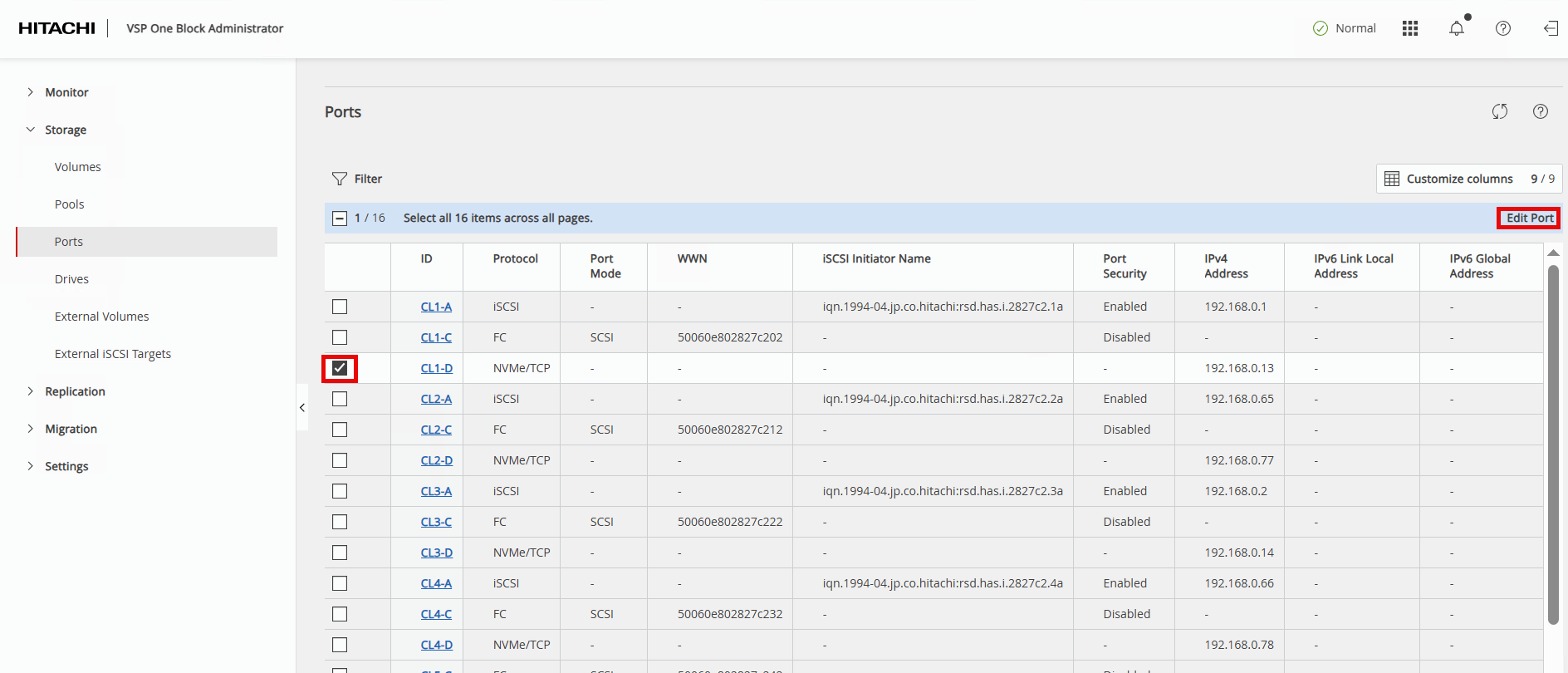

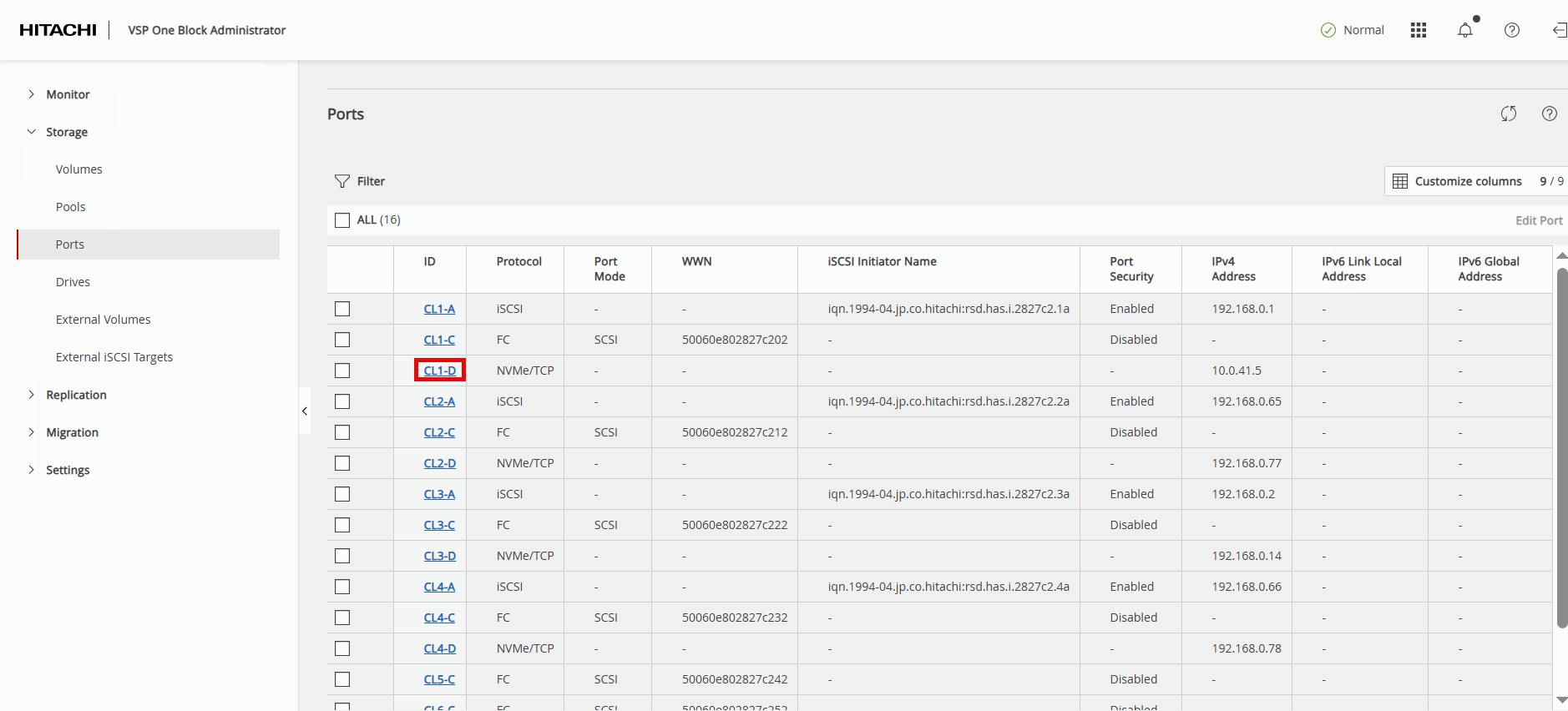

Step 2. In the left navigation pane, expand Storage and click Ports to view the installed storage ports on the storage system.

Step 3. To modify NVMe/TCP ports, select the first NVMe/TCP ID value and click Edit Port in the Actions pane.

Step 4. In the Edit NVMe/TCP Port dialog box, update the following fields and click Submit.

| Field | Value |
|---|---|
| PORT SPEED | Keep 100Gbps |
| TCP PORT NUMBER (I/O Controller) | Keep 4420 |
| TCP PORT NUMBER (Discovery Controller) | Keep 8009 |
| SELECTIVE ACK | Keep Enabled |
| DELAYED ACK | Keep Enabled |
| MAXIMUM WINDOW SIZE | Keep 1024 KiB |
| MTU SIZE | Change to 9000 bytes |
| IPV4 ADDRESS | Update based on the customer's network requirements. In this validation, Nexus A (CL1-D/CL2-D) traffic uses VLAN 41 with IP addresses in 10.0.41.x, and Nexus B (CL3-D/CL4-D) traffic uses VLAN 42 with IP addresses in 10.0.42.x. |
| SUBNET MASK | Update based on the customer's network requirements |
| DEFAULT GATEWAY | Update based on the customer's network requirements |
| IPV6 SETTINGS | Keep Disabled |
| VLAN | Keep Disabled |

Continuation of the Edit NVMe/TCP Port dialog box:

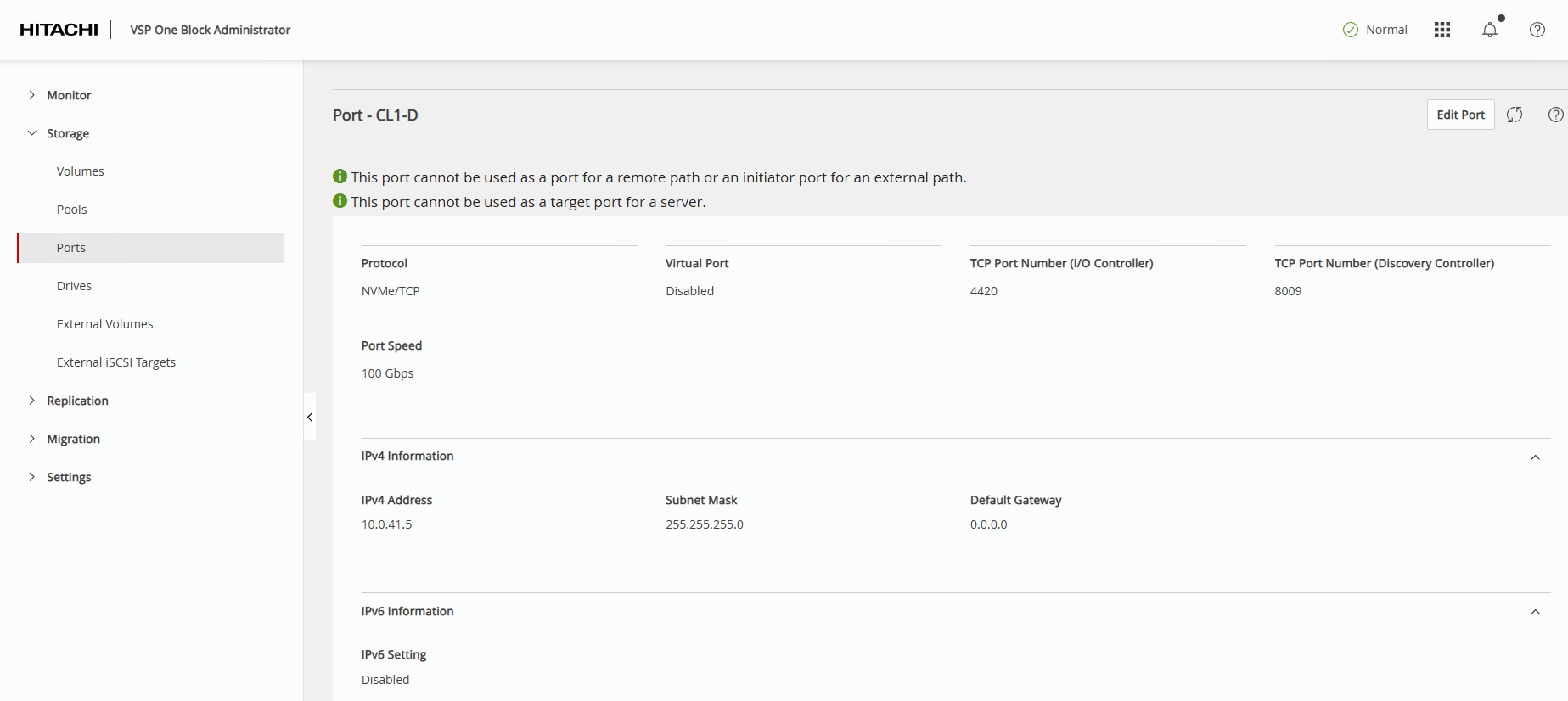

Step 5. The VSP One Block Administrator window will return to the Ports page. Select the port that was just updated to verify the new parameters.

The Ports – CL1-D page displays the updated parameters.

Step 6. Repeat Steps 3 through 5 for all remaining NVMe/TCP ports on the VSP One Block.
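The VSP One Block NVMe/TCP ports, the Nexus switches, and the ESXi NVMe/TCP VMkernel ports must all agree on subnet and MTU, so a quick pre-check of the address plan can save troubleshooting time. The Python sketch below uses the standard `ipaddress` module to confirm that the CL1-D/CL2-D and CL3-D/CL4-D addresses fall in the Fabric A (10.0.41.x) and Fabric B (10.0.42.x) subnets used in this validation. For each port, it also prints a don't-fragment `vmkping` command sized for a 9000-byte MTU. That payload is 9000 bytes minus the 20-byte IPv4 header and the 8-byte ICMP header, or 8972 bytes. The /24 prefix length, the individual IP addresses, and the VMkernel interface names `vmk1`/`vmk2` are hypothetical placeholders. Substitute your own values.

```python
import ipaddress

IPV4_AND_ICMP_HEADERS = 28  # 20-byte IPv4 header + 8-byte ICMP echo header

# Fabric plan from this validation (Fabric A: VLAN 41, Fabric B: VLAN 42).
# The /24 prefix length is an assumption; use your actual subnet mask.
FABRICS = {
    "A": {"subnet": ipaddress.ip_network("10.0.41.0/24"), "ports": ("CL1-D", "CL2-D")},
    "B": {"subnet": ipaddress.ip_network("10.0.42.0/24"), "ports": ("CL3-D", "CL4-D")},
}

# Hypothetical storage-port and ESXi VMkernel addresses, for illustration only.
PORT_IPS = {"CL1-D": "10.0.41.11", "CL2-D": "10.0.41.12",
            "CL3-D": "10.0.42.11", "CL4-D": "10.0.42.12"}
HOST_VMKS = {"A": ("vmk1", "10.0.41.101"), "B": ("vmk2", "10.0.42.101")}


def check_plan(mtu: int = 9000) -> tuple[list[str], list[str]]:
    """Return (problems, vmkping commands) for the NVMe/TCP storage port plan."""
    problems, commands = [], []
    payload = mtu - IPV4_AND_ICMP_HEADERS
    for fabric, plan in FABRICS.items():
        vmk, vmk_ip = HOST_VMKS[fabric]
        if ipaddress.ip_address(vmk_ip) not in plan["subnet"]:
            problems.append(f"{vmk} {vmk_ip} is outside {plan['subnet']}")
        for port in plan["ports"]:
            ip = ipaddress.ip_address(PORT_IPS[port])
            if ip not in plan["subnet"]:
                problems.append(f"{port} {ip} is outside {plan['subnet']}")
            # -d sets don't-fragment; -s is the ICMP payload size in bytes.
            commands.append(f"vmkping -I {vmk} -d -s {payload} {ip}")
    return problems, commands


problems, commands = check_plan()
print(problems)
for cmd in commands:
    print(cmd)
```

Run the printed commands from the ESXi shell after the host networking is in place. A failure at 8972 bytes that succeeds at a smaller size usually points to an MTU mismatch somewhere on the path.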

Hitachi Dynamic Provisioning Pool for LDEVs for UCS Servers

This section provides the procedure to create a Hitachi Dynamic Provisioning pool for LDEVs for UCS servers.

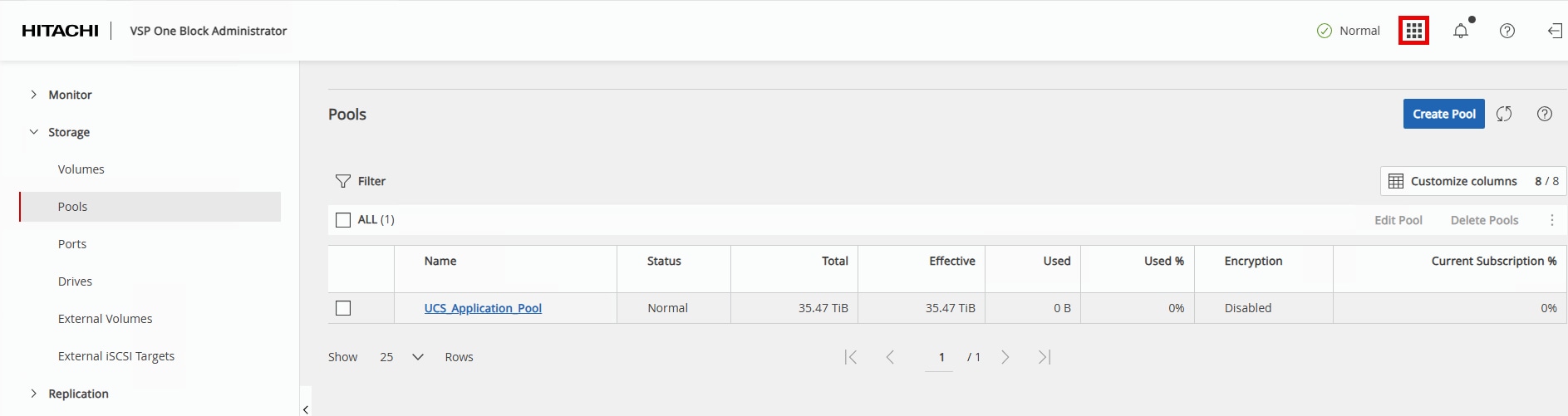

Procedure 1. Create a Hitachi Dynamic Provisioning Pool for LDEVs for UCS Servers

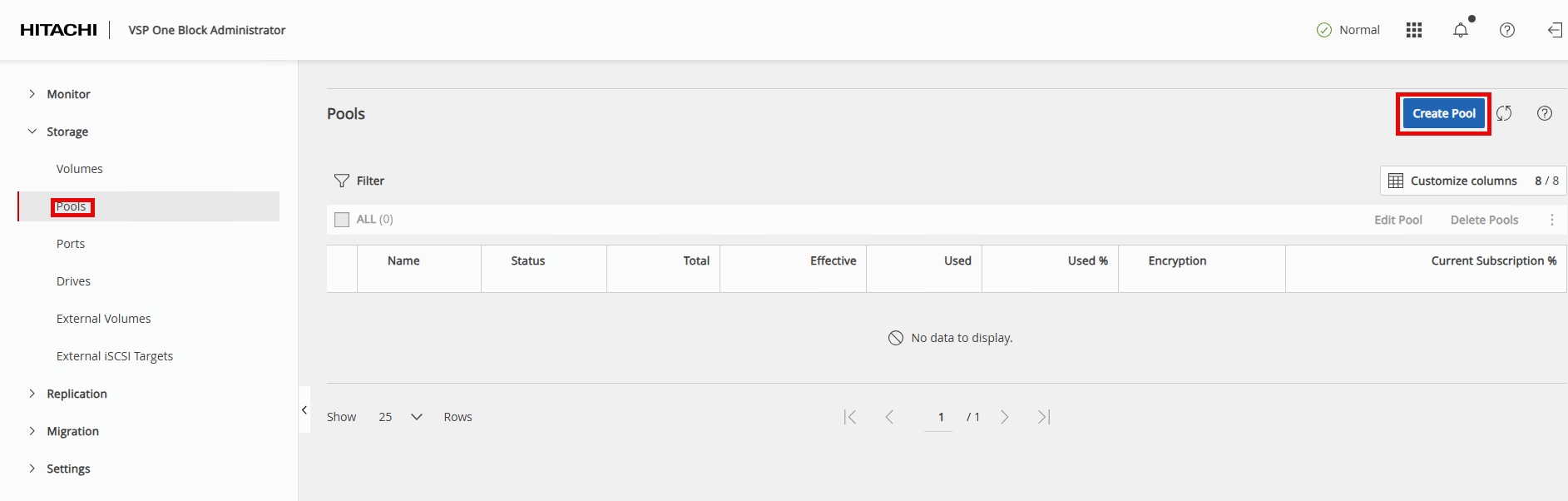

Step 1. In the VSP One Block Administrator window, select Pools and then click Create Pool.

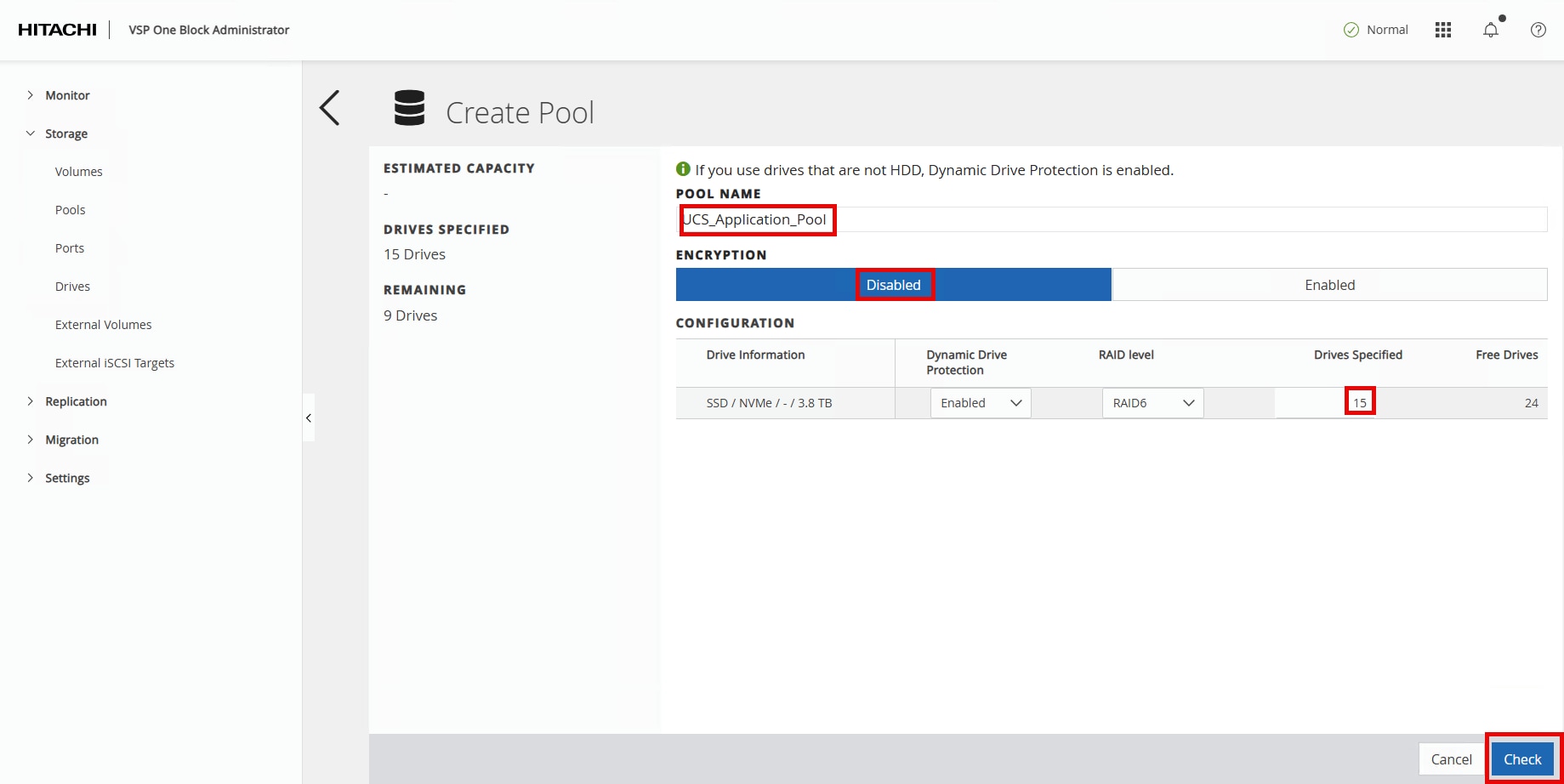

Step 2. Enter the following details in the Create Pool window:

a. POOL NAME: UCS_Application_Pool

b. Encryption: Select Disabled.

c. Drives Specified: Enter 15.

Step 3. Click Check.

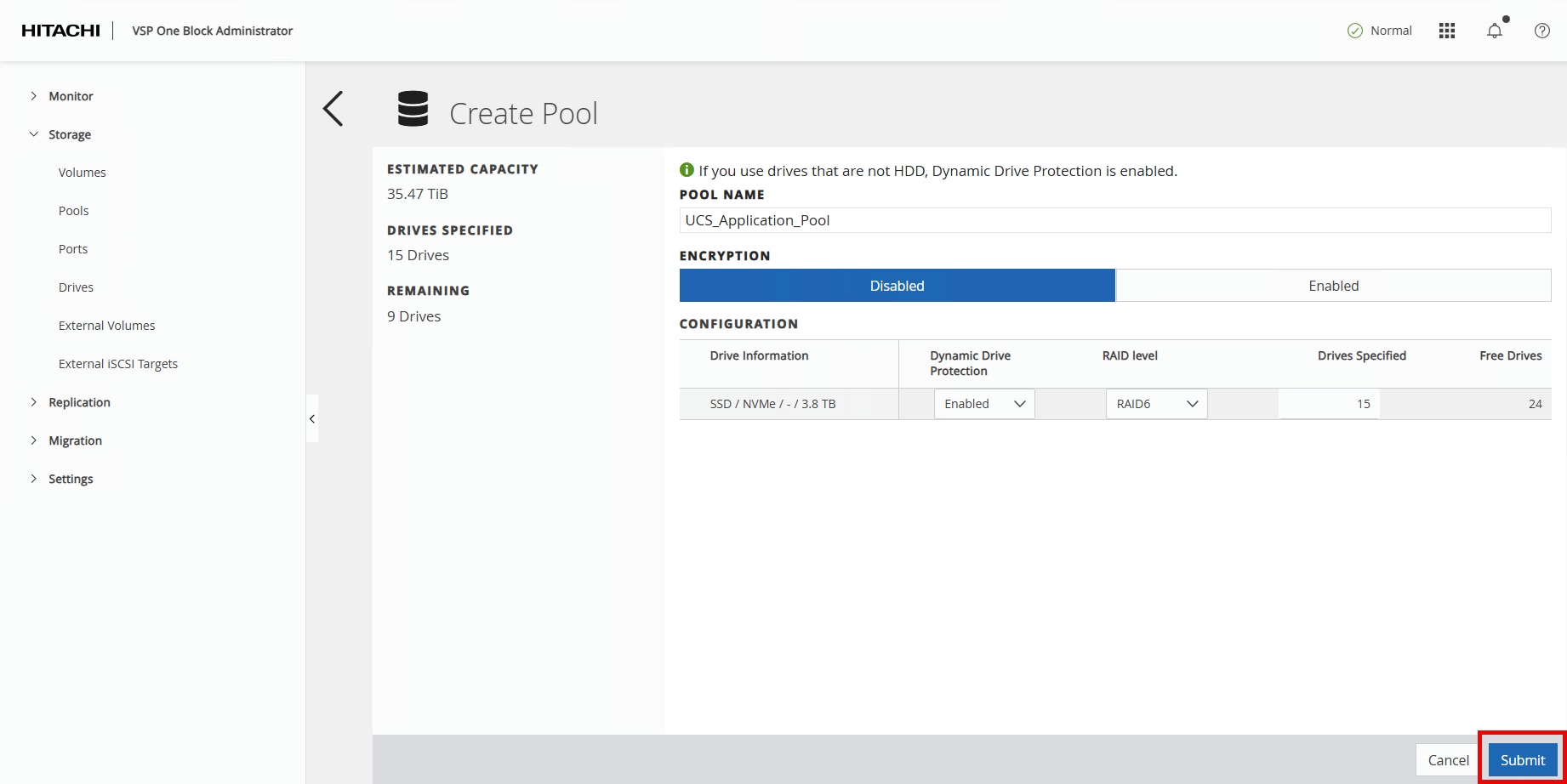

Step 4. Click Submit.

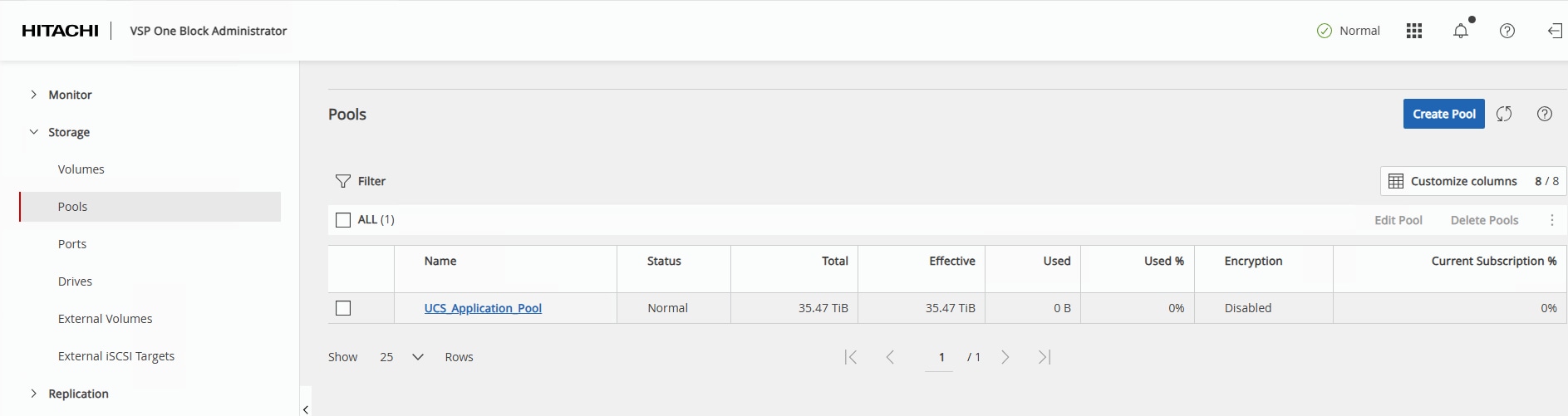

Pool creation completes within a few minutes.

NVM Subsystem, LDEV, and Host Access

This section provides the procedure to create the NVM subsystem and LDEV and to enable host access.

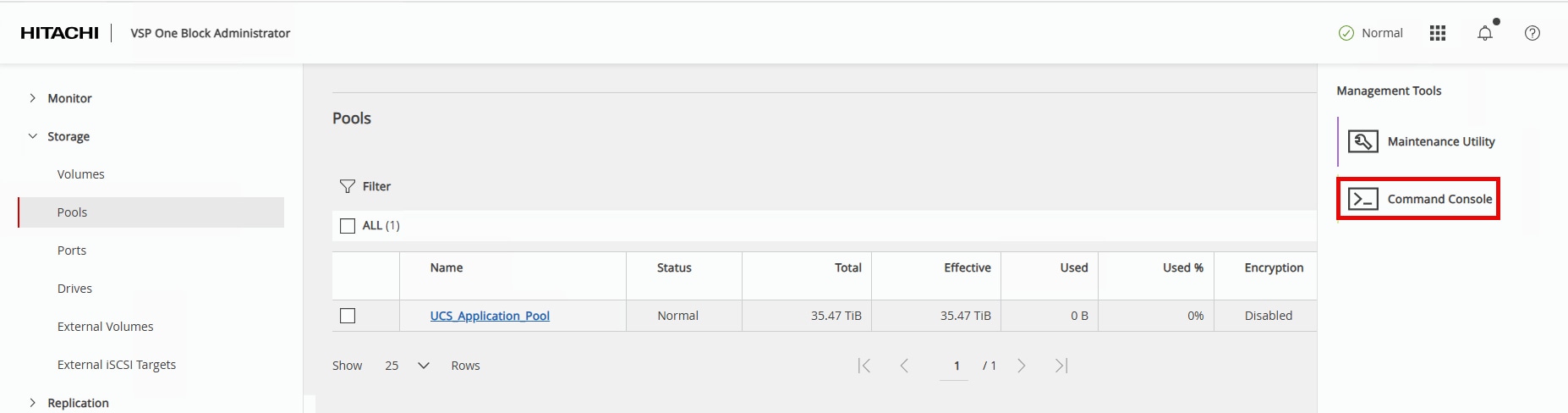

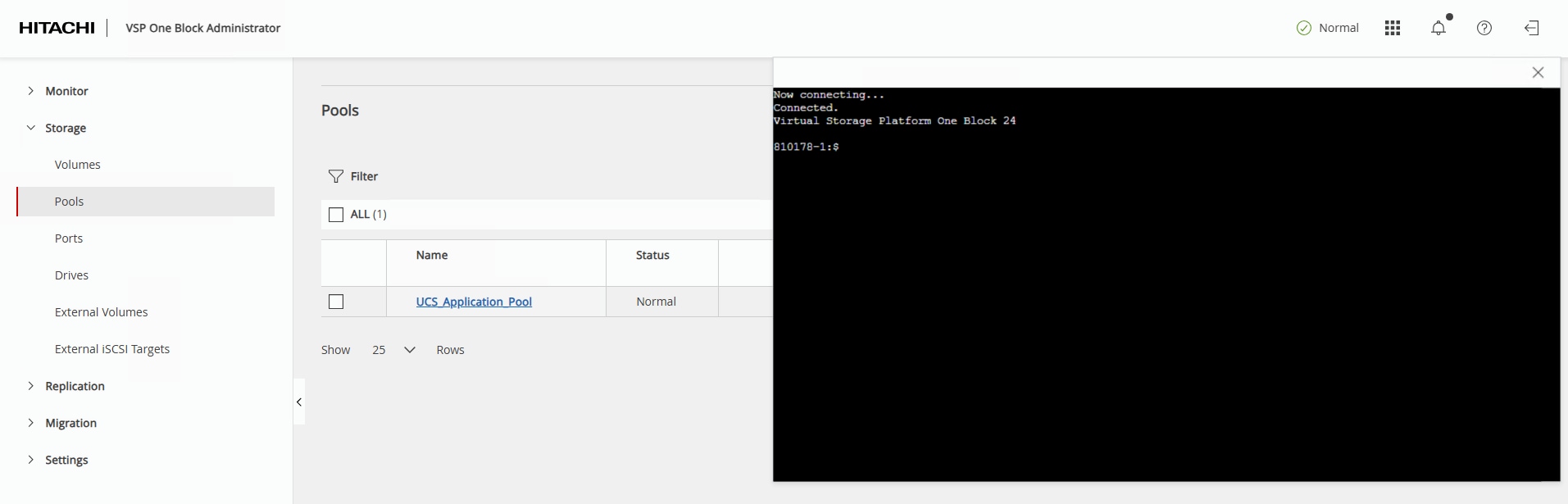

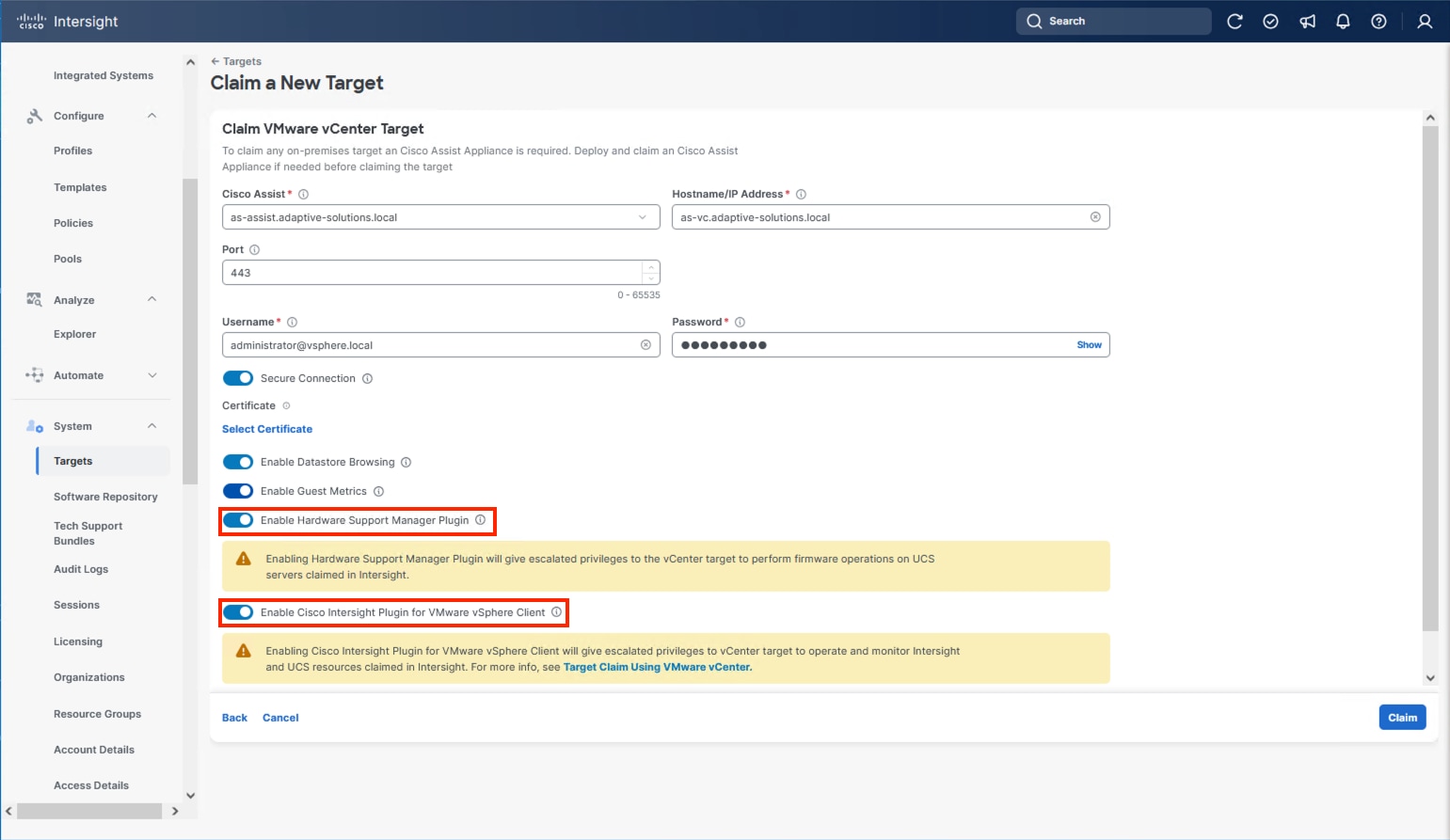

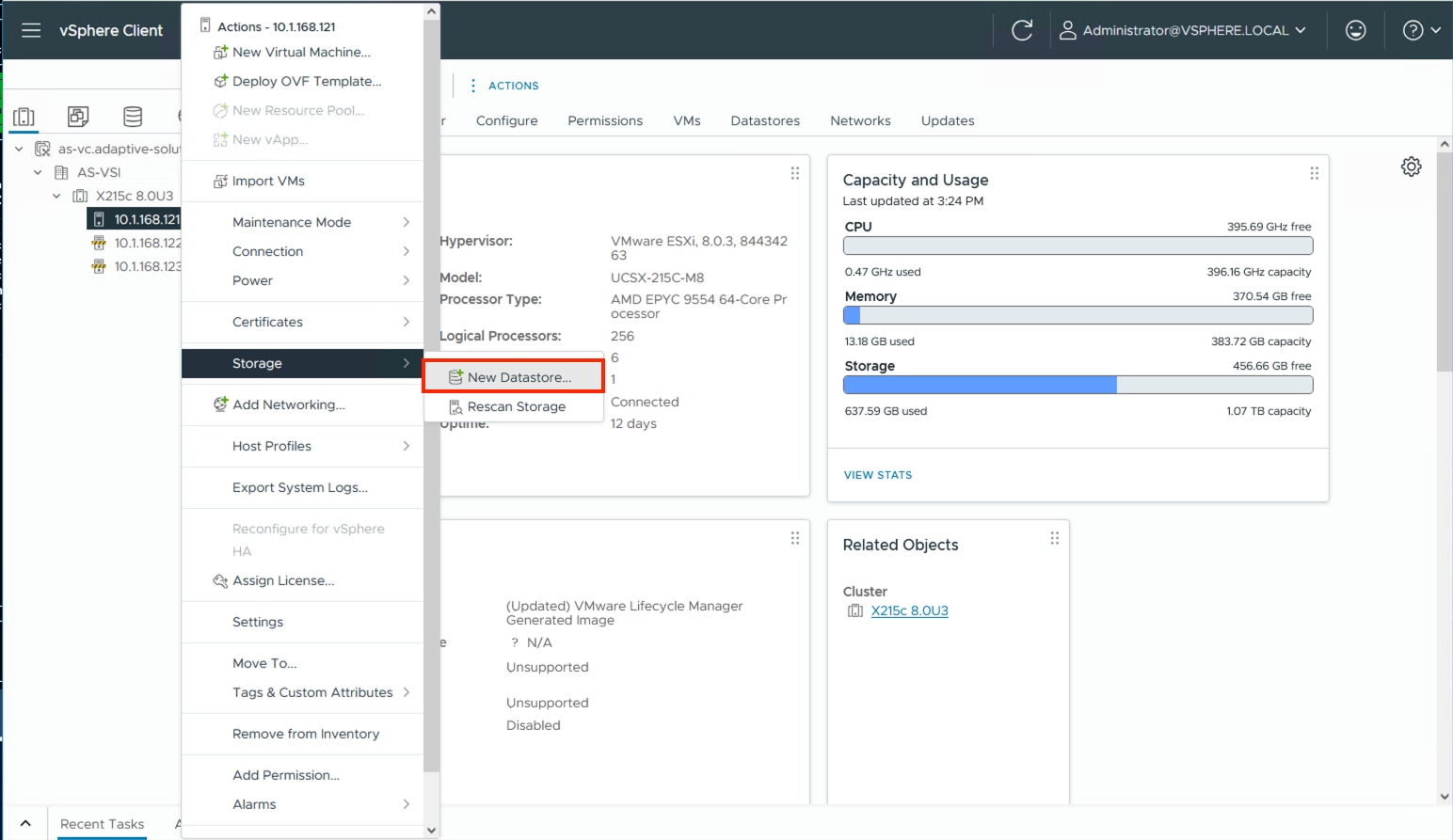

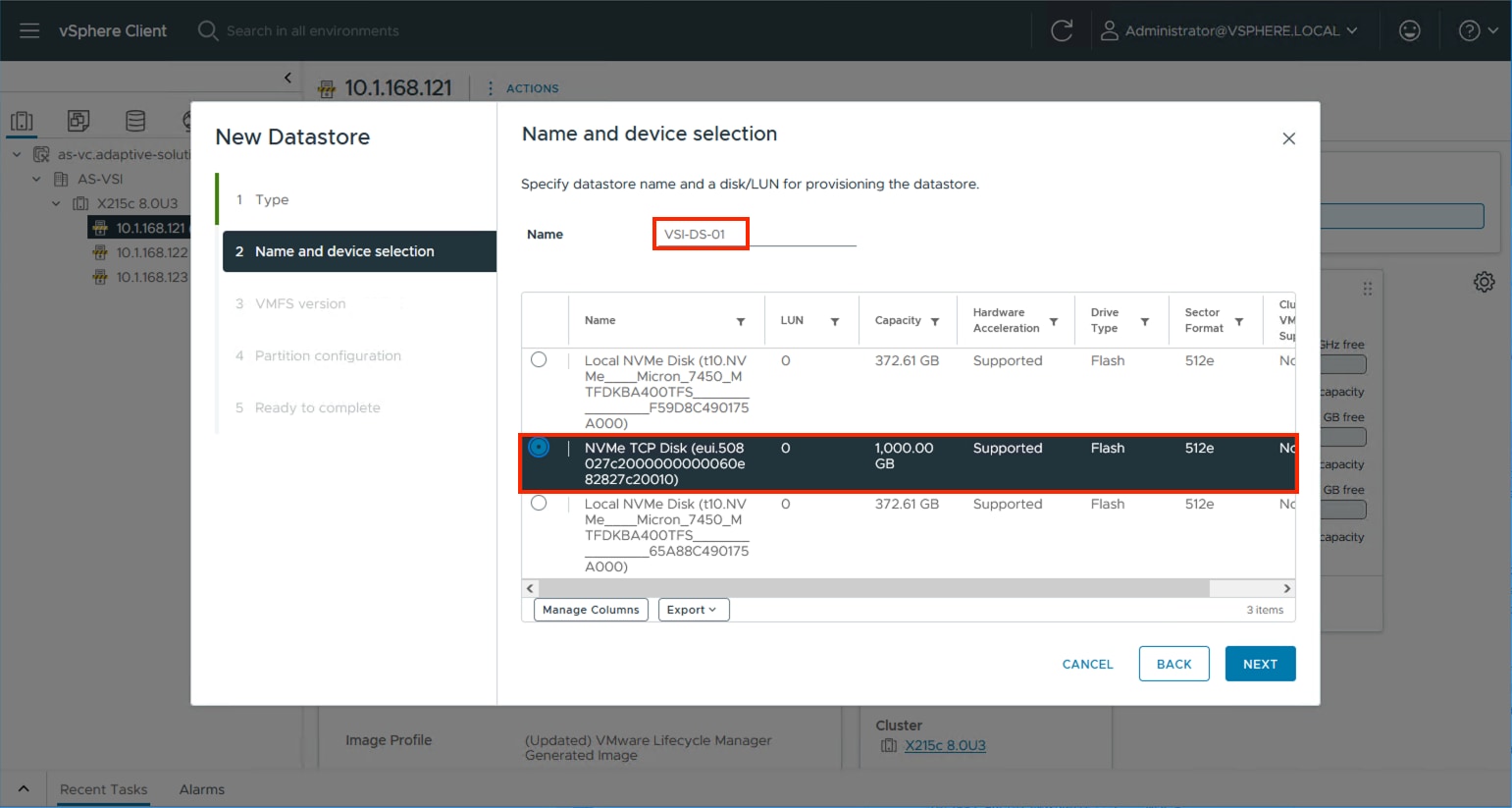

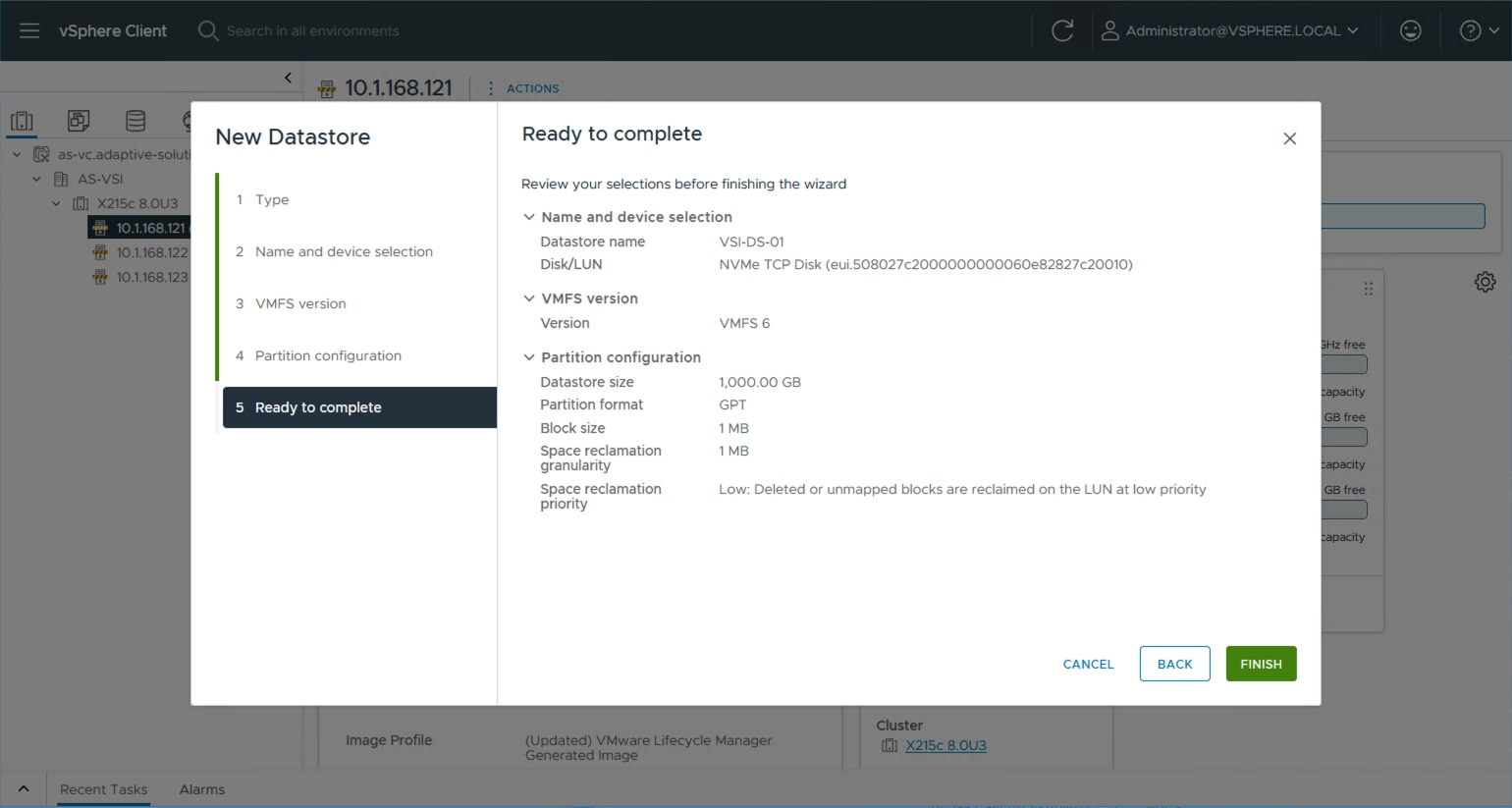

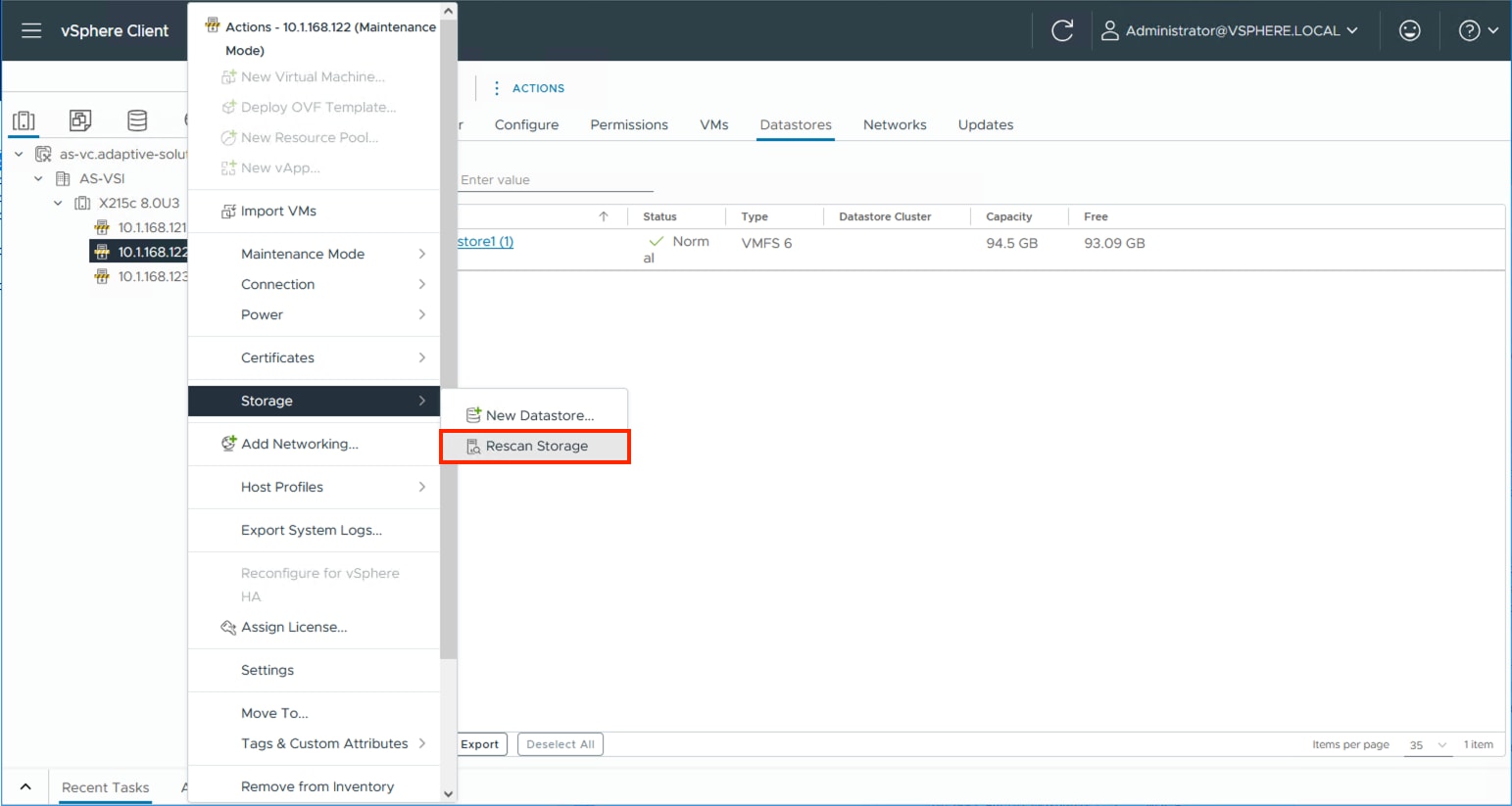

Procedure 1. Create the NVM Subsystem and LDEV and enable host access