Application hosting features

Application hosting features are IOS-XR infrastructure capabilities that

- allow third-party applications to run directly on NCS 1004 devices,

- enable extension of device functionality to complement IOS-XR features, and

- provide native support for containerized applications through Docker.

The Docker daemon is packaged with IOS XR software on the base Linux OS, which provides native support for running applications inside Docker containers on IOS XR. Docker is the preferred way to run third-party applications (TPAs) on IOS XR.

App Manager

The App Manager is the IOS XR infrastructure responsible for managing the life cycle of all container applications (third-party and Cisco internal) and process scripts. App Manager runs natively on the host as an IOS XR process and uses Docker, systemd, and RPM to manage the life cycle of third-party applications.

Caution: Do not use MPLS packets on Linux interfaces in Docker containers.

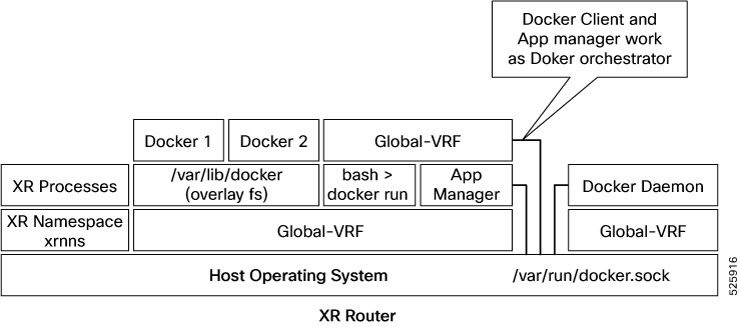

Docker container application hosting architectures

A Docker container application hosting architecture is a platform solution that

- utilizes Docker containers to deploy and manage applications,

- enables the App Manager to interact with containers through the Docker client and daemon, and

- facilitates application isolation and resource management across network devices.

Cisco IOS XR uses Docker to host applications through a structured architecture. The App Manager internally uses the Docker client to send Docker commands that manage third-party application (TPA) containers, such as Docker 1 and Docker 2.

The Docker client sends these commands to the Docker daemon over the docker.sock Unix socket, and the daemon executes them to create and manage the containers.
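To illustrate the client-to-daemon leg of this architecture, here is a minimal Python sketch of what any Docker client does: it sends an HTTP request to the Docker Engine API over the daemon's Unix socket. It is not App Manager code. The socket path is an assumption you must supply for your device (the Docker default is /var/run/docker.sock), and the helper names UnixHTTPConnection and docker_api_get are hypothetical.

```python
import http.client
import json
import socket


class UnixHTTPConnection(http.client.HTTPConnection):
    """An HTTPConnection that dials a Unix domain socket instead of TCP."""

    def __init__(self, socket_path: str, timeout: float = 5.0):
        # "localhost" only populates the Host header; no TCP connection is made.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def docker_api_get(socket_path: str, path: str):
    """Send GET <path> to the Docker Engine API and return the decoded JSON body."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", path)
        resp = conn.getresponse()
        body = resp.read()
        if resp.status != 200:
            raise RuntimeError(f"Docker API returned {resp.status}: {body!r}")
        return json.loads(body)
    finally:
        conn.close()
```

For example, docker_api_get(socket_path, "/containers/json") returns the running containers as parsed JSON, the same information that docker ps lists.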

When the docker run command is executed, a Docker container is created and started from a Docker image. Docker containers can operate within the global-vrf network namespace, which provides network isolation.

Docker uses overlayfs, located under the /var/lib/docker directory, to manage container directories and files.

For detailed instructions on how to host an application in Docker containers on Cisco IOS XR, see Hosting an Application in Docker Containers.

Recommendation: Follow hosting guidelines for Docker containers

Use the following host paths when you specify the --mount and --volume options with docker run:

- /var/run/netns

- /var/lib/docker

- /misc/disk1

- /disk0

- /misc/config/grpc

- /etc

- /dev/net/tun

- /var/xr/config/grpc

- /opt/owner

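A minimal Python sketch, assuming you keep docker-run-opts strings in scripts and want to check them against the host path list above before deployment. The helper names are hypothetical, and treating subdirectories of a listed path (for example, /misc/disk1/app) as acceptable is an assumption, not a documented rule.

```python
import shlex

# Host paths that the guideline above recommends for --mount and --volume.
RECOMMENDED_HOST_PATHS = (
    "/var/run/netns",
    "/var/lib/docker",
    "/misc/disk1",
    "/disk0",
    "/misc/config/grpc",
    "/etc",
    "/dev/net/tun",
    "/var/xr/config/grpc",
    "/opt/owner",
)


def is_recommended(host_path: str) -> bool:
    """True if host_path is a listed path or (assumption) a subdirectory of one."""
    path = host_path.rstrip("/") or "/"
    return any(path == p or path.startswith(p + "/") for p in RECOMMENDED_HOST_PATHS)


def _source_of(flag: str, value: str):
    """Return the host-side path of a --volume or --mount value, if any."""
    if flag == "mount":
        fields = dict(f.split("=", 1) for f in value.split(",") if "=" in f)
        src = fields.get("source") or fields.get("src")
    else:
        src = value.split(":", 1)[0]
    # Named volumes (no leading "/") are not host paths, so they are skipped.
    return src if src and src.startswith("/") else None


def unrecommended_paths(run_opts: str) -> list:
    """List host paths in a docker-run-opts string that are not on the list."""
    tokens = shlex.split(run_opts)
    bad = []
    i = 0
    while i < len(tokens):
        tok = tokens[i]
        flag = value = None
        if tok in ("-v", "--volume", "--mount") and i + 1 < len(tokens):
            flag = "mount" if tok == "--mount" else "volume"
            value = tokens[i + 1]
            i += 1  # consume the option's argument
        elif tok.startswith(("--volume=", "--mount=")):
            flag, value = tok[2:].split("=", 1)
        if flag:
            src = _source_of(flag, value)
            if src and not is_recommended(src):
                bad.append(src)
        i += 1
    return bad
```

For example, unrecommended_paths("--mount type=bind,source=/tmp,target=/tmp") flags /tmp, while -v /misc/disk1/app:/data passes.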
TP application resource configurations

A TP application resource configuration is a system resource management feature that

- limits the allocation of CPU, RAM, and disk space to third-party applications (TPAs),

- enforces these limits through the IOS XR application manager (appmgr), and

- ensures platform security and operational integrity by monitoring application traffic and supporting application signing.

IOS XR has built-in safeguards that prevent third-party applications from interfering with its role as a network OS.

- Although IOS XR does not limit the number of TPAs that can run concurrently, it does constrain the resources allocated to the Docker daemon, based on the following parameters:

  - CPU: By default, ¼ of the CPU per core available in the platform.

    You can set a hard limit on CPU usage in the range of 25 to 75 percent of the total system CPU by using the appmgr resources containers limit cpu value command. This configuration prevents TPAs from using more CPU than the configured hard limit, irrespective of the CPU usage of other XR processes.

    This example shows the CPU hard limit configuration:

        RP/0/RSP0/CPU0:ios(config)#appmgr resources containers limit cpu ?
          <25-75>  In Percentage
        RP/0/RSP0/CPU0:ios(config)#appmgr resources containers limit cpu 25

  - RAM: By default, 1 GB of memory is available.

    You can set a hard limit on memory usage in the range of 1 to 25 percent of the overall system memory by using the appmgr resources containers limit memory value command. This configuration prevents TPAs from using more memory than the configured hard limit.

    This example shows the memory hard limit configuration:

        RP/0/RSP0/CPU0:ios(config)#appmgr resources containers limit memory ?
          <1-25>  In Percentage
        RP/0/RSP0/CPU0:ios(config)#appmgr resources containers limit memory 20

  - Disk space: Restricted by the partition size, which varies by platform. To check it, execute "run df -h" and examine the size of the /misc/app_host or /var/lib/docker mount.

- All traffic to and from the application is monitored by LPTS (Local Packet Transport Services), the XR control plane protection mechanism.

- Signed applications are supported on IOS XR. You can sign your own applications by onboarding an Owner Certificate (OC) through Ownership Voucher-based workflows, as described in RFC 8366. After the Owner Certificate is onboarded, you can sign applications with GPG keys based on it, and the router authenticates them during application installation.
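The two hard-limit commands above accept integer percentages in fixed ranges. A minimal Python sketch, assuming you generate router configuration from a script, that validates the values against those ranges before rendering the same commands. The helper names are hypothetical, and the memory arithmetic assumes the cap is simply the given percentage of total system memory.

```python
# Ranges (in percent) accepted by the hard-limit commands shown above.
LIMIT_RANGES = {"cpu": (25, 75), "memory": (1, 25)}


def limit_commands(cpu_pct=None, memory_pct=None) -> list:
    """Render appmgr container hard-limit commands; None keeps the default."""
    cmds = []
    for kind, value in (("cpu", cpu_pct), ("memory", memory_pct)):
        if value is None:
            continue
        low, high = LIMIT_RANGES[kind]
        if not isinstance(value, int) or not low <= value <= high:
            raise ValueError(f"{kind} limit must be an integer from {low} to {high}, got {value!r}")
        cmds.append(f"appmgr resources containers limit {kind} {value}")
    return cmds


def memory_cap_mib(total_mib: int, memory_pct: int) -> int:
    """Approximate TPA memory cap, assuming it is memory_pct percent of total memory."""
    return total_mib * memory_pct // 100
```

For example, limit_commands(cpu_pct=25, memory_pct=20) reproduces the two example commands, and on a hypothetical system with 32768 MiB of RAM a memory limit of 20 corresponds to about 6553 MiB.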

This table shows the various functions performed by appmgr.

| Package Manager | Lifecycle Manager | Monitoring and Debugging |
|---|---|---|

TP application bring-up methods

A TP application bring-up is a deployment process that

- allows TP container applications to be launched and initialized for operational readiness,

- provides multiple configuration approaches to match different deployment needs, and

- ensures flexibility in integration with system models and container management tools.

The four recommended methods for TP application bring-up are:

- App Config: Use the appmgr application configuration to define startup parameters.

- UM Model: Use the Unified Model (UM) for standardized operational controls.

- Native Yang Model: Leverage native YANG data models for direct integration and configuration.

- gNOI Containerz: Use gNOI Containerz for streamlined container orchestration and lifecycle management.

Configure a TPA using application configuration

Follow these steps to configure the Docker runtime options.

Procedure

Step 1: Configure the Docker runtime option. Use --pids-limit with appmgr to limit the number of process IDs.

Example: This example shows how to configure the Docker runtime option --pids-limit with appmgr. The number of process IDs is limited to 90.

Step 2: Verify the Docker runtime option configuration by using the show running-config appmgr command.

Example: This example shows how to verify the Docker runtime option configuration.
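A hedged Python sketch of the Step 1 configuration for the alpine_app application used elsewhere in this section. The keyword order (activate type docker source, docker-run-opts, docker-run-cmd) is an assumption modeled on the native YANG leaves in the NETCONF example; confirm it against the command reference for your release. The helper names are hypothetical.

```python
def pids_limit_opt(limit: int) -> str:
    """Format --pids-limit; the options table documents -1 as unlimited."""
    if limit != -1 and limit <= 0:
        raise ValueError("pids limit must be a positive integer or -1")
    return f"--pids-limit={limit}"


def appmgr_app_config(app: str, image: str, run_opts: str, run_cmd: str) -> list:
    """Render a hypothetical appmgr application block for a Docker TPA.

    Assumption: keyword order mirrors the native YANG leaves
    (type, source-name, docker-run-opts, docker-run-cmd).
    """
    for text in (run_opts, run_cmd):
        # The values are wrapped in double quotes below, so they cannot contain one.
        if '"' in text:
            raise ValueError("values must not contain double quotes")
    return [
        "appmgr",
        f" application {app}",
        f'  activate type docker source {image} '
        f'docker-run-opts "{run_opts}" docker-run-cmd "{run_cmd}"',
    ]
```

Calling appmgr_app_config("alpine_app", "alpine", "-it " + pids_limit_opt(90), "/bin/sh") renders a configuration that limits the container to 90 process IDs.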

Configure TPAs with the UM model

Follow these steps to configure the Docker runtime options.

Procedure

Configure the Docker runtime option. Use --pids-limit with NETCONF to limit the number of process IDs.

Example: This example shows how to configure the Docker runtime option --pids-limit with NETCONF. The number of process IDs for the specified TPA is limited as configured. For example, with

Native model deployment for TPAs

This NETCONF example uses the native YANG model (Cisco-IOS-XR-appmgr-cfg) to activate an application called alpine_app from the alpine image, applying the Docker runtime option --pids-limit=90 to restrict the number of process IDs it can use. Limiting process IDs supports resource control and operational stability in environments with multiple TPAs.

    <rpc xmlns="urn:ietf:params:xml:ns:netconf:base:1.0" message-id="101">
      <edit-config>
        <target>
          <candidate/>
        </target>
        <config>
          <appmgr xmlns="http://cisco.com/ns/yang/Cisco-IOS-XR-appmgr-cfg">
            <applications>
              <application>
                <application-name>alpine_app</application-name>
                <activate>
                  <type>docker</type>
                  <source-name>alpine</source-name>
                  <docker-run-cmd>/bin/sh</docker-run-cmd>
                  <docker-run-opts>-it --pids-limit=90</docker-run-opts>
                </activate>
              </application>
            </applications>
          </appmgr>
        </config>
      </edit-config>
    </rpc>

Key benefits of using the native deployment model:

- Enables direct integration for TPAs.

- Provides better resource management through advanced container options.

- Improves performance and operational reliability for supported applications.
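The edit-config RPC in the native model example is easy to template. A minimal Python sketch, using only the standard library, that builds the same RPC for any application name, image, command, and options, plus the standard NETCONF commit RPC that applies changes staged in the candidate datastore. The helper names are hypothetical, and the NETCONF transport (an SSH session to the device) is out of scope.

```python
import xml.etree.ElementTree as ET

NC_NS = "urn:ietf:params:xml:ns:netconf:base:1.0"
APPMGR_NS = "http://cisco.com/ns/yang/Cisco-IOS-XR-appmgr-cfg"


def _leaf(parent, tag, text):
    """Append a child element that carries only text."""
    el = ET.SubElement(parent, tag)
    el.text = text
    return el


def appmgr_edit_config(app, image, run_cmd, run_opts, message_id="101") -> str:
    """Build an <edit-config> RPC that activates a Docker TPA via appmgr."""
    # Writing xmlns as a plain attribute keeps the default namespaces as in the example.
    rpc = ET.Element("rpc", {"xmlns": NC_NS, "message-id": message_id})
    edit = ET.SubElement(rpc, "edit-config")
    ET.SubElement(ET.SubElement(edit, "target"), "candidate")
    appmgr = ET.SubElement(ET.SubElement(edit, "config"), "appmgr", {"xmlns": APPMGR_NS})
    application = ET.SubElement(ET.SubElement(appmgr, "applications"), "application")
    _leaf(application, "application-name", app)
    activate = ET.SubElement(application, "activate")
    _leaf(activate, "type", "docker")
    _leaf(activate, "source-name", image)
    _leaf(activate, "docker-run-cmd", run_cmd)
    _leaf(activate, "docker-run-opts", run_opts)
    return ET.tostring(rpc, encoding="unicode")


def commit_rpc(message_id="102") -> str:
    """Changes written to <candidate/> take effect only after a NETCONF <commit/>."""
    rpc = ET.Element("rpc", {"xmlns": NC_NS, "message-id": message_id})
    ET.SubElement(rpc, "commit")
    return ET.tostring(rpc, encoding="unicode")
```

Sending appmgr_edit_config("alpine_app", "alpine", "/bin/sh", "-it --pids-limit=90") followed by commit_rpc() reproduces the example configuration and commits it.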

gNOI Containerz for TPA onboarding and lifecycle management

gNOI Containerz provides:

- a streamlined workflow for onboarding TPAs onto the device,

- standardized methods for managing the full lifecycle of containers, including deployment, start/stop, and removal, and

- simplified and consistent administration of application containers on the device.

Containerz, the gNOI Container Service on NCS 1004 devices, is a workflow for onboarding and managing third-party applications by using gNOI RPCs.

For more information, see gNOI Containerz.

Docker run options

A Docker run option is a container configuration parameter that

- controls resource allocation such as CPU, memory, and networking,

- enables fine-tuning of security, health checks, and process behavior, and

- allows customization during container launch for precise operation.

Docker run options are applied in IOS XR environments through the Application Manager (AppMgr). You can configure these options when you launch a Docker containerized application by using the appmgr activate command. AppMgr oversees these containers, and the runtime options you supply can override default settings for aspects such as CPU, security, and health checks. You can use either the CLI or NETCONF, but in both cases all runtime options must be added under docker-run-opts.

| Docker run option | Description |
|---|---|
| --cpus | Number of CPUs |
| --cpuset-cpus | CPUs in which to allow execution (for example, 0-3 or 0,1) |
| --cap-drop | Drop Linux capabilities |
| --user, -u | Username or UID |
| --group-add | Add additional groups to join |
| --health-cmd | Command to run to check health |
| --health-interval | Time between running the check |
| --health-retries | Consecutive failures needed to report unhealthy |
| --health-start-period | Start period for the container to initialize before starting the health-retries countdown |
| --health-timeout | Maximum time to allow one check to run |
| --no-healthcheck | Disable any container-specified HEALTHCHECK |
| --add-host | Add a custom host-to-IP mapping (host:ip) |
| --dns | Set custom DNS servers |
| --dns-opt | Set DNS options |
| --dns-search | Set custom DNS search domains |
| --domainname | Container NIS domain name |
| --oom-score-adj | Tune the host's OOM preferences (-1000 to 1000) |
| --shm-size | Size of /dev/shm |
| --init | Run an init inside the container that forwards signals and reaps processes |
| --label, -l | Set metadata on a container |
| --label-file | Read in a line-delimited file of labels |
| --pids-limit | Tune the container PIDs limit (set -1 for unlimited) |
| --workdir | Working directory inside the container |
| --ulimit | Ulimit options |
| --read-only | Mount the container's root filesystem as read only |
| --volumes-from | Mount volumes from the specified container(s) |
| --stop-signal | Signal to stop the container |
| --stop-timeout | Timeout (in seconds) to stop a container |
| --cap-add NET_RAW | Enable the NET_RAW capability |
| --publish | Publish a container's port(s) to the host |
| --entrypoint | Overwrite the default ENTRYPOINT of the image |
| --expose | Expose a port or a range of ports |
| --link | Add a link to another container |
| --env | Set environment variables |
| --env-file | Read in a file of environment variables |
| --network | Connect a container to a network |
| --hostname | Container host name |
| --interactive | Keep STDIN open even if not attached |
| --tty | Allocate a pseudo-TTY |
| --publish-all | Publish all exposed ports to random ports |
| --volume | Bind mount a volume |
| --mount | Attach a filesystem mount to the container |
| --restart | Restart policy to apply when a container exits |
| --cap-add | Add Linux capabilities |
| --log-driver | Logging driver for the container |
| --log-opt | Log driver options |
| --detach | Run the container in the background and print the container ID |
| --memory | Memory limit |
| --memory-reservation | Memory soft limit |
| --cpu-shares | CPU shares (relative weight) |
| --sysctl | Sysctl options |
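All runtime options end up in a single docker-run-opts string. A minimal Python sketch, assuming you assemble that string from a script: it accepts only flags from a subset of the table above and shell-quotes each value. The subset, the helper name, and the assumption that AppMgr splits the string with shell-like quoting rules are illustrative, not documented behavior.

```python
import shlex

# A subset of the long-form options listed in the table above (extend as needed).
SUPPORTED_OPTS = {
    "--cpus", "--cpuset-cpus", "--cpu-shares", "--memory", "--memory-reservation",
    "--pids-limit", "--cap-add", "--cap-drop", "--read-only", "--init", "--env",
    "--volume", "--mount", "--network", "--hostname", "--restart", "--health-cmd",
    "--health-interval", "--health-retries", "--log-driver", "--log-opt",
    "--interactive", "--tty", "--detach",
}


def build_run_opts(options) -> str:
    """Join (flag, value) pairs into one docker-run-opts string.

    A value of None emits a bare switch such as --read-only.
    """
    parts = []
    for flag, value in options:
        if flag not in SUPPORTED_OPTS:
            raise ValueError(f"{flag} is not in the supported subset")
        if value is None:
            parts.append(flag)
        else:
            # shlex.quote keeps values with spaces together as one argument.
            parts.append(f"{flag}={shlex.quote(str(value))}")
    opts = " ".join(parts)
    if '"' in opts:
        # Assumption: the CLI wraps docker-run-opts in double quotes.
        raise ValueError("double quotes are not allowed in docker-run-opts")
    return opts
```

For example, build_run_opts([("--cpus", 1), ("--memory", "512m"), ("--pids-limit", 90), ("--read-only", None)]) returns "--cpus=1 --memory=512m --pids-limit=90 --read-only", while a flag that the table does not list, such as --privileged, is rejected.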
