Installation requirements

This topic lists the requirements that you must review before you install Cisco Optical Network Controller.

VMware platform requirements

The VMware platform requirements are:

- VMware vCenter Server version 7.0 or later.
- VMware vSphere server and client version 7.0 or later.
- ESXi host version 7.0 or later on the servers that host the virtual machines.

Attention: Upgrade to VMware vCenter Server 8.0 U2 if you are using VMware vCenter Server 8.0.2 or VMware vCenter Server 8.0.1.

Cisco Optical Network Controller is deployed on rack or blade servers within vSphere.

Note: For more details on VMware vSphere, see VMware vSphere.

Supporting service requirements

The supporting service requirements are:

-

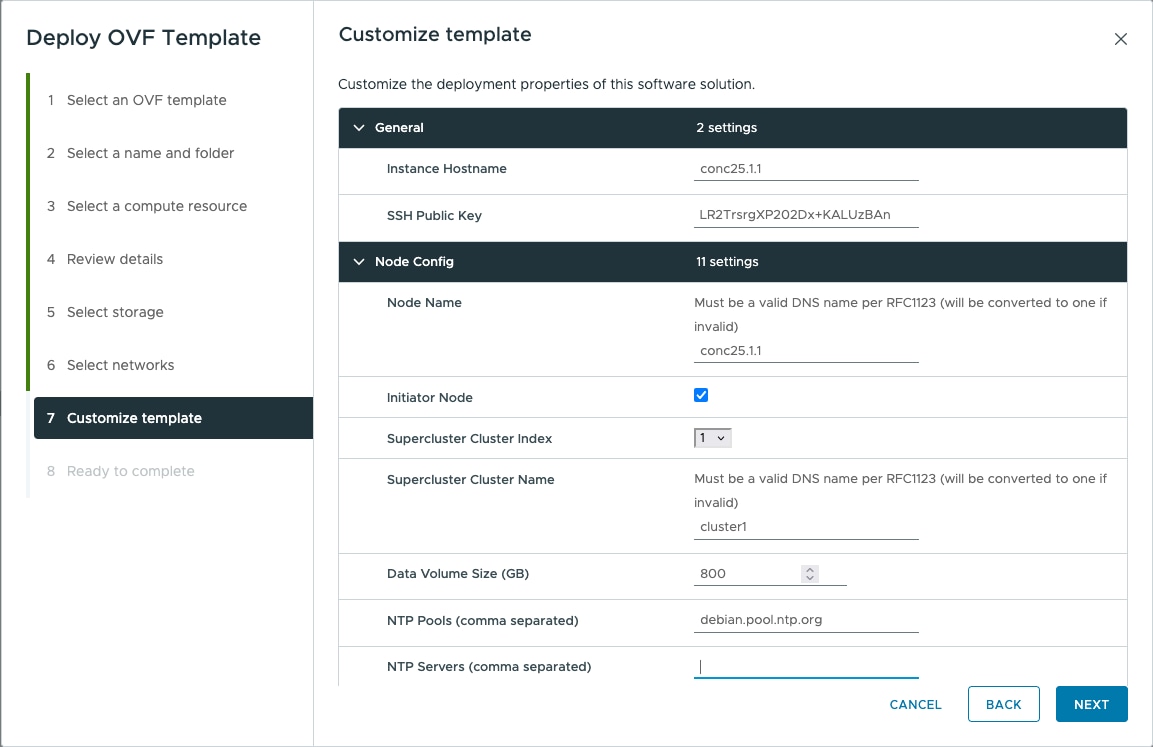

DNS server: The DNS server can be an internal DNS server if the Cisco Optical Network Controller instance is not exposed to the internet.

-

NTP server or NTP pool: Use the same NTP source on Cisco Optical Network Controller, the client PC or VM, and the ESXi host.

Network requirements

The network requirements are:

-

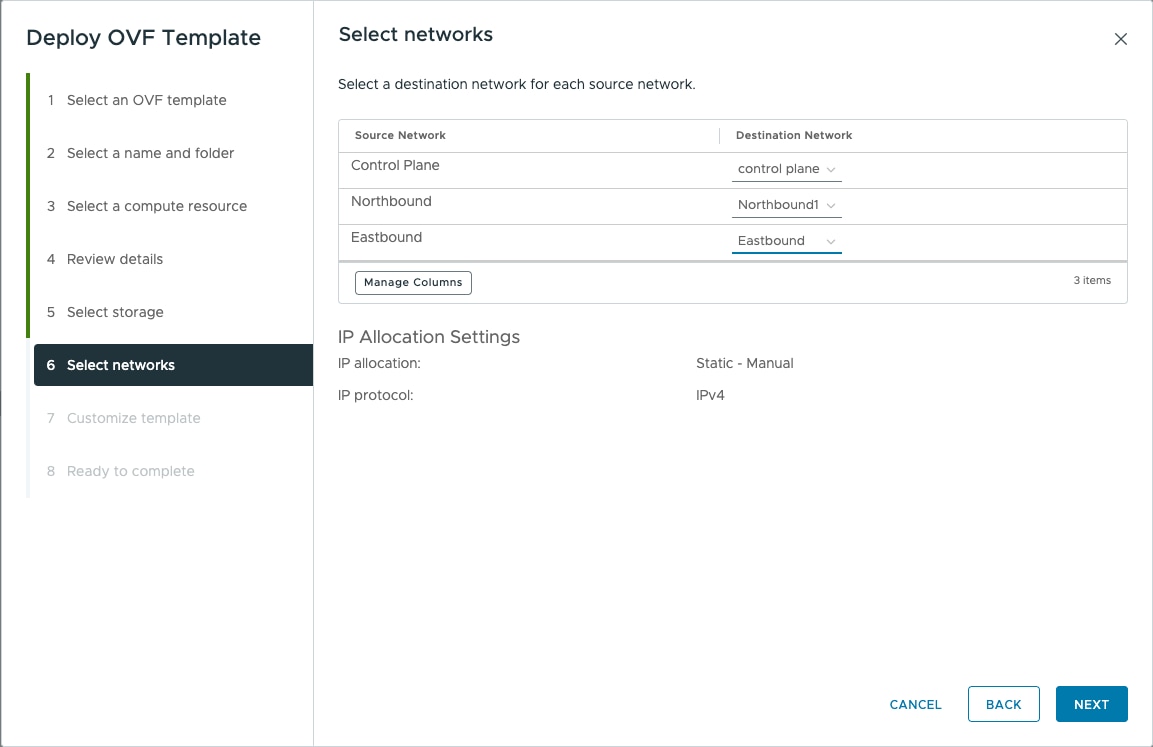

Before installation, three networks must be created.

-

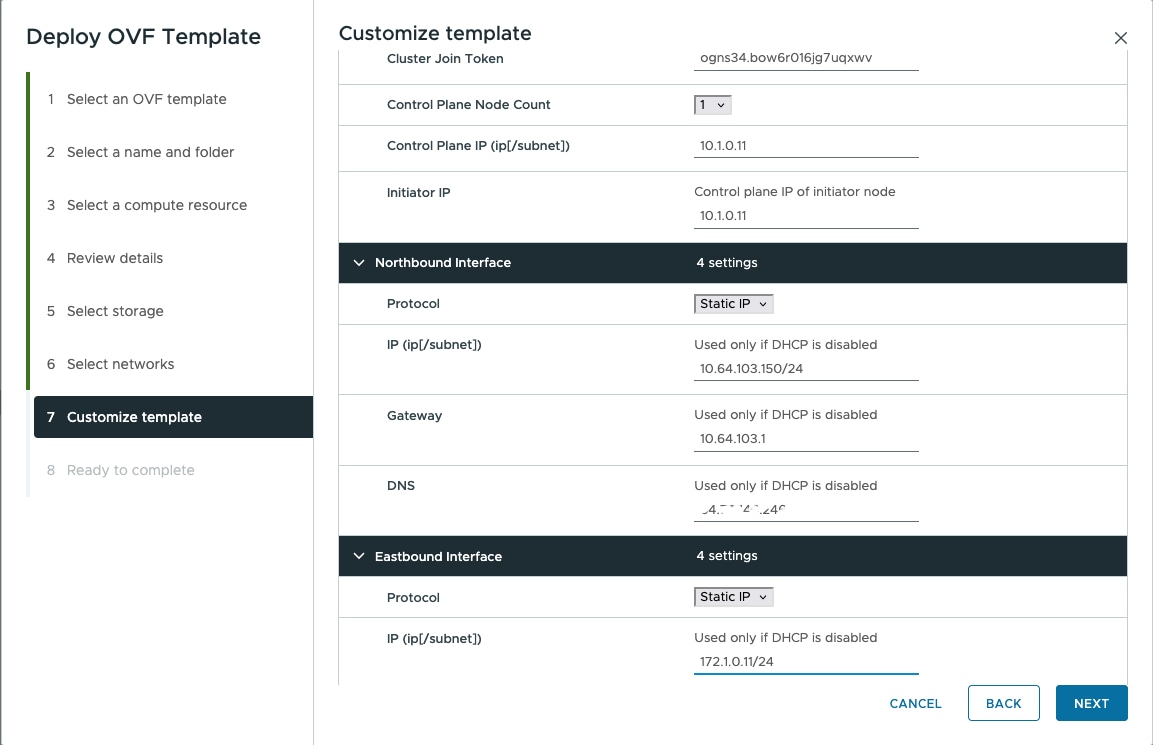

Control Plane Network: Used for internal communication between deployed virtual machines within a cluster. In a standalone deployment, this network can be a private network. In a high availability cluster, create this network between the servers where each HA node is deployed.

-

VM Network or Northbound Network: Used for communication between users and the cluster. This network carries public traffic and hosts the UI.

-

Eastbound Network: Used for internal communication between deployed virtual machines within a cluster. In a standalone deployment, this network can be a private network.

Preparation task

ESXi hosts use a self-signed certificate by default. Before deployment, accept the browser certificate warning for each ESXi host and prepare the required VMware networks.

For the procedure, see Prepare VMware networking and ESXi access.

Hardware requirements and sizing notes

This table lists the minimum hardware requirements for the base profile with daily retention only.

| Sizing | CPU | Memory | Solid State Drive (SSD) |
|---|---|---|---|
| Extra Small (XS) | 16 vCPU | 64 GB | 1 TB |
| Small (S) | 32 vCPU | 128 GB | 2.5 TB |
| Medium (M) | 48 vCPU | 256 GB | 6 TB |

This table lists the minimum hardware requirements for the extended profile with weekly and monthly retention.

| Sizing | CPU | Memory | Solid State Drive (SSD) |
|---|---|---|---|
| Extra Small (XS) | 16 vCPU | 64 GB | 1.5 TB |
| Small (S) | 32 vCPU | 128 GB | 5 TB |
| Medium (M) | 48 vCPU | 256 GB | 12 TB |
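
The values in the two sizing tables can be captured as a small lookup, for example in a pre-deployment check script. The following Python sketch is illustrative only: the names `PROFILES`, `SSD_TB`, and `minimum_resources` are hypothetical and are not part of Cisco Optical Network Controller. The numbers are copied from the tables.

```python
# Minimum hardware requirements, copied from the sizing tables.
# All names here are illustrative, not part of any Cisco tool.
PROFILES = {
    "XS": {"vcpu": 16, "memory_gb": 64},
    "S": {"vcpu": 32, "memory_gb": 128},
    "M": {"vcpu": 48, "memory_gb": 256},
}

# Minimum SSD capacity in TB for each retention profile.
SSD_TB = {
    "base": {"XS": 1.0, "S": 2.5, "M": 6.0},       # daily retention only
    "extended": {"XS": 1.5, "S": 5.0, "M": 12.0},  # weekly and monthly retention
}


def minimum_resources(size: str, retention: str = "base") -> dict:
    """Return the minimum vCPU, memory, and SSD capacity for a sizing."""
    if size not in PROFILES:
        raise ValueError(f"unknown sizing: {size!r}")
    if retention not in SSD_TB:
        raise ValueError(f"unknown retention profile: {retention!r}")
    return {**PROFILES[size], "ssd_tb": SSD_TB[retention][size]}


print(minimum_resources("S", "extended"))
# {'vcpu': 32, 'memory_gb': 128, 'ssd_tb': 5.0}
```

Only the SSD capacity differs between the base and extended profiles, which is why the sketch keeps it in a separate mapping.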

Use SSDs that meet the disk write latency requirement of 100 ms or lower.

Attention: Cisco Optical Network Controller supports only SSDs for storage.

The hardware sizing notes are:

- Configure vCPU and memory according to the VM profile before you power on the VM in vCenter. XS uses 16 vCPU and 64 GB. S uses 32 vCPU and 128 GB. M uses 48 vCPU and 256 GB.
- The supported vCPU-to-physical-core ratio is 2:1 if hyperthreading is enabled and supported by the hardware. Otherwise, the ratio is 1:1.

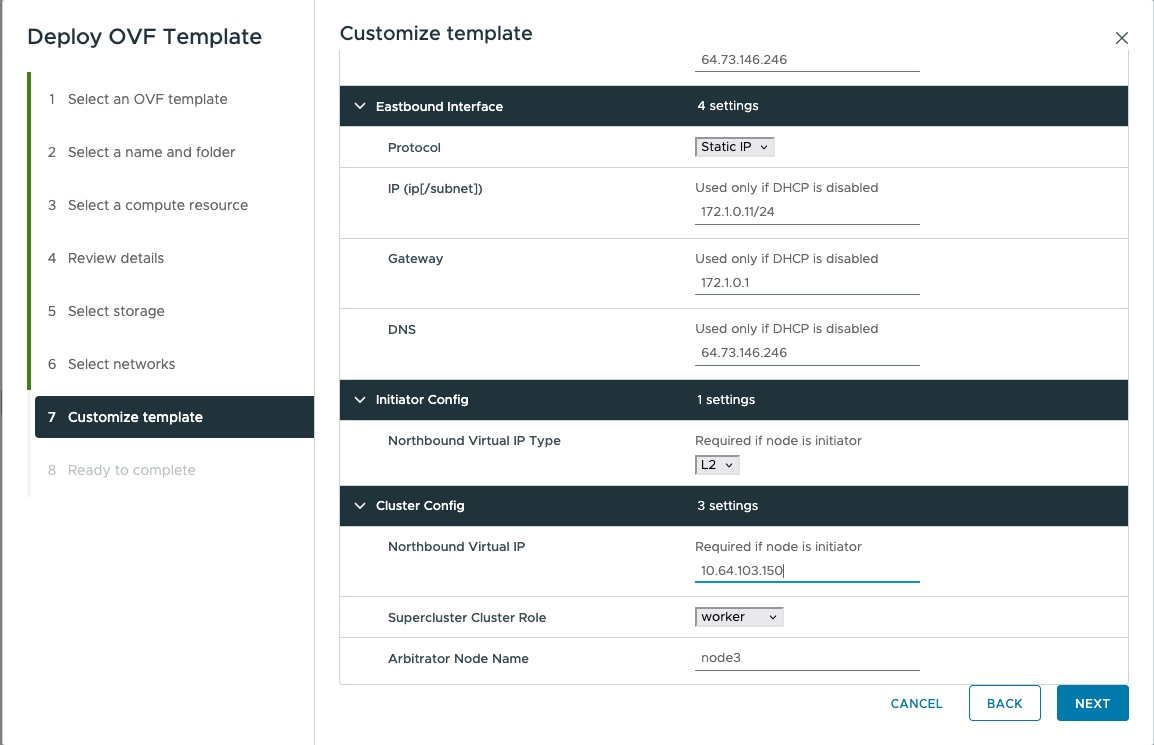

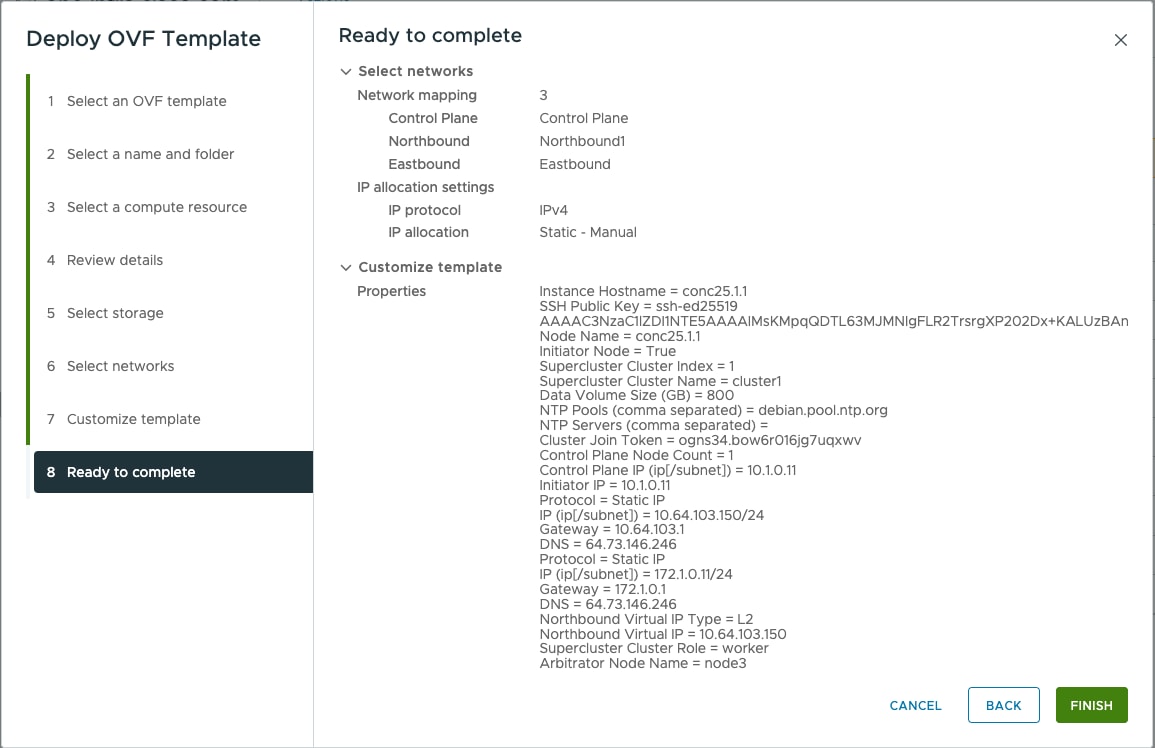
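
As a worked example of the vCPU-to-physical-core ratio in the sizing notes, the following Python sketch estimates how many physical cores a host needs to back one VM. The function name `physical_cores_needed` is hypothetical, and the calculation is one reading of the ratio: it considers a single VM and ignores hypervisor overhead and any other workloads on the host.

```python
import math


def physical_cores_needed(vcpus: int, hyperthreading: bool) -> int:
    """Physical cores needed to back a VM at the supported ratio.

    The vCPU-to-core ratio is 2:1 with hyperthreading, otherwise 1:1.
    """
    if vcpus <= 0:
        raise ValueError("vcpus must be positive")
    ratio = 2 if hyperthreading else 1
    return math.ceil(vcpus / ratio)


# vCPU counts from the sizing tables.
for name, vcpus in {"XS": 16, "S": 32, "M": 48}.items():
    print(name, physical_cores_needed(vcpus, True), physical_cores_needed(vcpus, False))
# XS 8 16
# S 16 32
# M 24 48
```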

Deployment-specific requirements

This table lists the deployment-specific requirements.

| Deployment type | Requirements |
|---|---|
| Standalone (SA) | Control Plane Network: Can be a private network. Requires one IP address. Gateway required. DNS server required. Northbound Network (VM Network): Must be a public network. Requires one public IP address. Gateway required. DNS server required. Eastbound Network: Can be a private network. Requires one private IP address. Gateway required. DNS server required. |
| Highly Available (HA) | Control Plane: Three IP addresses for the individual nodes. This can be a private network. VM Network: Four IP addresses. This must be a public network, with three IP addresses for node management and one virtual IP for northbound communication and UI access. |

Note: For a high availability deployment, nodes on different ESXi hosts should have a minimum link bandwidth of 10 Gbps between them to ensure efficient data communication and synchronization.
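
The IP address counts in the deployment table can be checked against a proposed addressing plan before installation. The following Python sketch is a minimal illustration under stated assumptions: the names `REQUIRED_IPS` and `check_ip_plan` are hypothetical, the addresses are placeholders, and because the HA row does not list an Eastbound Network count, the sketch does not assume one.

```python
import ipaddress

# IP addresses required per network, taken from the deployment table.
# The HA row does not list an Eastbound count, so none is assumed here.
REQUIRED_IPS = {
    "SA": {"control_plane": 1, "northbound": 1, "eastbound": 1},
    "HA": {"control_plane": 3, "northbound": 4},  # 3 node IPs + 1 virtual IP
}


def check_ip_plan(deployment: str, plan: dict[str, list[str]]) -> list[str]:
    """Return the problems found in a proposed IP plan (empty if none)."""
    problems = []
    for network, count in REQUIRED_IPS[deployment].items():
        addresses = plan.get(network, [])
        for addr in addresses:
            try:
                ipaddress.ip_address(addr)
            except ValueError:
                problems.append(f"{network}: {addr!r} is not a valid IP address")
        unique = len(set(addresses))
        if unique != count:
            problems.append(
                f"{network}: expected {count} unique addresses, got {unique}"
            )
    return problems


# Placeholder addresses; the northbound list is missing the virtual IP.
ha_plan = {
    "control_plane": ["10.0.0.11", "10.0.0.12", "10.0.0.13"],
    "northbound": ["192.0.2.11", "192.0.2.12", "192.0.2.13"],
}
print(check_ip_plan("HA", ha_plan))
# ['northbound: expected 4 unique addresses, got 3']
```

The sketch only counts and parses addresses. It does not verify that a network is public or private, or that gateways and DNS servers are configured.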

Communications matrix

This table lists the default port assignments.

| Traffic type | Port | Description |
|---|---|---|
| Inbound | TCP 22 | SSH remote management |
| Inbound | TCP 8443 | HTTPS for UI access |
| Outbound | TCP 22 | NETCONF to routers |
| Outbound | TCP 389 | LDAP if using Active Directory |
| Outbound | TCP 636 | LDAPS if using Active Directory |
| Outbound | Customer specific | HTTP for access to an SDN controller |
| Outbound | User specific | HTTPS for access to an SDN controller |
| Outbound | TCP 3082, 3083, 2361, 6251 | TL1 to optical devices |
| Eastbound | TCP 10443 | Supercluster join requests |
| Eastbound | UDP 8472 | VxLAN |
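
One way to confirm that a firewall permits the default inbound ports is a plain TCP connection test from a client machine. The following Python sketch uses only the standard `socket` module. The names `INBOUND_TCP`, `tcp_port_open`, and `check_inbound` are hypothetical, and the host passed to `check_inbound` would be your own controller address. UDP 8472 is left out because a TCP connect test cannot probe UDP.

```python
import socket

# Default inbound TCP ports from the communications matrix.
INBOUND_TCP = {22: "SSH remote management", 8443: "HTTPS for UI access"}


def tcp_port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


def check_inbound(host: str) -> dict[int, bool]:
    """Probe each default inbound TCP port on the given host."""
    return {port: tcp_port_open(host, port) for port in INBOUND_TCP}
```

A successful connection shows only that the port is reachable and accepting connections. It does not confirm which service is listening.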
