Cisco Crosswork Hierarchical Controller 11.0 Installation Guide


This document is an installation guide for Cisco Crosswork Hierarchical Controller.

The document explains:

● Cisco Crosswork Hierarchical Controller Prerequisites

● Install Cisco Crosswork Hierarchical Controller Platform

● Upgrade Cisco Crosswork Hierarchical Controller Platform

● Install Cisco Network Services Orchestrator Crosswork Hierarchical Controller Function Pack

Cisco Crosswork Hierarchical Controller Prerequisites

Cisco Crosswork Hierarchical Controller supports Standalone (SA) or supercluster deployment models.

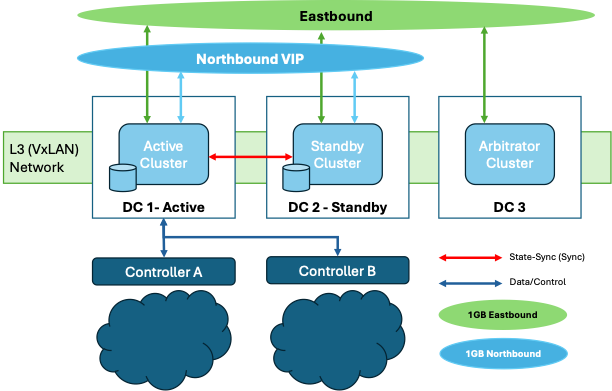

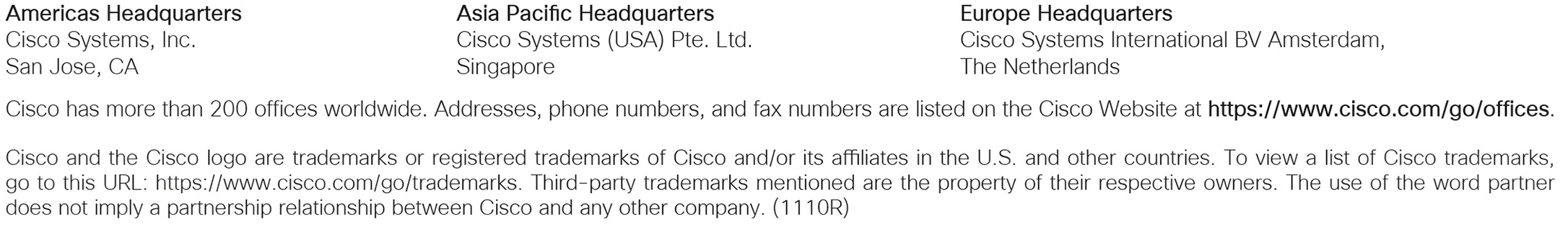

For geo-redundancy, a supercluster may be deployed in a 1+1+1 scenario, which includes:

● active single-node (worker) cluster

● standby single-node (worker) cluster

● single witness (arbitrator) node

Cisco Crosswork Hierarchical Controller Supercluster Architecture

Cisco Crosswork Hierarchical Controller is released as a VMware OVA file distribution. The OVA is a disk image that is deployed using vCenter on any ESXi host. It packages together several components, including an OVF descriptor file and virtual disk files containing a basic operating system and the Cisco Crosswork Hierarchical Controller installation files.

The OVA can be deployed using vCenter on ESXi hosts and supports the Standalone (SA) or supercluster deployment models.

● VMware vCenter Server 7.0 Update 3

● VMware ESXi 7.0 Update 3

Note: The system was tested with VMware ESXi version 7.0 Update 3. It is expected to work with other VMware ESXi 7.0 sub-versions as well. If you are using a sub-version other than VMware ESXi 7.0 Update 3 and you encounter any issues, contact your Cisco support representative.

Cisco Crosswork Hierarchical Controller is also released as a QCOW2 file.

The QCOW2 file is a disk image used with QEMU and KVM on Linux systems. It functions as a virtual hard drive for a VM, and it contains a basic operating system and the Cisco Crosswork Hierarchical Controller installation files.

The QCOW2 file can be deployed on KVM hosts and supports the Standalone (SA) or supercluster deployment models.

● RHEL 9.4 with VM management utilities:

◦ virt-manager version 4.1.0

◦ virsh version 10.0.0

● QCOW2 file for VM install. This is the disk of the VM and must have a unique name.

Primary or Standalone Nodes - Non-Scale

This specification is for primary or standalone instances of Crosswork Hierarchical Controller. This applies to deployments with up to 1000 devices.

| Hardware | Requirement |
|---|---|
| vCPU | 10 Cores |
| Memory | 96 GB |
| Multiple ESXi hosts (Control Plane) | Minimum 1 Gbps required between hosts. |
| Storage | 500 GB SSD |
| HW Reservation | 100% for vCPU and memory |
| NICs | 2 for standalone; 3 for supercluster |

Witness Node - Non-Scale/Scale

This specification defines the requirements for the Crosswork Hierarchical Controller witness (or arbitrator) instance, a component that is essential for both scale and non-scale deployment.

| Hardware | Requirement |
|---|---|
| vCPU | 4 Cores |
| Memory | 32 GB |
| Storage | 200 GB SSD |
| HW Reservation | 100% for vCPU and memory |
| NICs | 3 for supercluster |

Primary or Standalone Nodes - Scale

This specification is for primary or standalone instances of Crosswork Hierarchical Controller in scale deployments.

Note: For additional scale requirements, contact the Cisco Support team.

| Hardware | Requirement |
|---|---|
| vCPU | 20 Cores |
| Memory | 128 GB |
| Multiple ESXi hosts (Control Plane) | Minimum 1 Gbps required between hosts; up to 10 Gbps in scaled environments. |
| Storage | 2 TB SSD. Note: This is without considering RAID configurations. |
| HW Reservation | 100% for vCPU and memory |
| NICs | 2 for standalone; 3 for supercluster |

Latency All Nodes

| Latency | Requirement |
|---|---|
| Geo-redundancy (1+1+1) | <100 milliseconds between clusters (1+1+1) for the Eastbound Network |

Client

The client machine requirements are:

● Windows PC or Mac

● GPU

● Web browser with GPU hardware acceleration support

● Recommended

◦ Screen resolution 1920x1080

◦ Google Chrome web browser version 75 or later

Note: A GPU is required to get the full benefit of the network 3D map.

The table that follows lists the default port assignments. The ports can be customized as necessary to meet your network requirements.

| Traffic | Port | Description |
|---|---|---|
| Inbound | TCP 22 | SSH remote management |
| | TCP 8443 | HTTPS for UI access |
| Outbound | TCP 22 | NETCONF to routers |
| | TCP 389 | LDAP if using Active Directory |
| | TCP 636 | LDAPS if using Active Directory |
| | Customer Specific | HTTP for access to an SDN controller |
| | Customer Specific | HTTPS for access to an SDN controller |
| | TCP 3082, 3083, 2361, 6251 | TL1 to optical devices |
| Eastbound | TCP 10443 | Supercluster join request |
| | UDP 8472 | VxLAN |
| syslog | Customer specific | TCP/UDP |
| Control Plane Ports (Internal network between cluster nodes, not exposed) | TCP 443 | Kubernetes |
| | TCP 6443 | Kubernetes |
| | TCP 10250 | Kubernetes |
| | TCP 2379 | etcd |
| | TCP 2380 | etcd |
| | UDP 8472 | VxLAN |
| | ICMP | Ping between nodes (optional) |

The storage volume required for Crosswork Hierarchical Controller production depends on the amount of storage needed for performance counters and for daily DB backups.

The performance monitoring storage is calculated based on the number of client ports and the amount of time the counters are stored. The ballpark figure is 700 MB for 1000 ports.

The detailed formula to calculate the storage is:

<uncompressed data> = <number of ports> * <samples per day> * <number of days> * 60

Storage = (<uncompressed data> * 0.1) + (<daily backup size> * <number of backup days> * <number of backup months>)

Taking the following assumptions into account:

● Samples – samples per day

● Sample size per port – 60 bytes

● Days – number of days the PM data is stored

● Compression ratio – data is compressed in DB, at a ratio of ~10%

● Daily backup – ~60 MB per day

● Number of backup days – 14 days

● Number of backup months – default is 12 months
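The storage formula can be sketched in Python as follows. This is a minimal illustration only: the function names, the conversion of 1 MB to 1,000,000 bytes, and the sample inputs (96 samples per day, 30 days of PM data) are assumptions and are not taken from this guide.

```python
# Sketch of the storage formula. All names and sample inputs are hypothetical.

SAMPLE_SIZE_BYTES = 60      # sample size per port (from the assumptions list)
COMPRESSION_RATIO = 0.1     # data is compressed in the DB at a ratio of ~10%
BYTES_PER_MB = 1_000_000    # assumption: decimal megabytes


def pm_storage_bytes(ports, samples_per_day, pm_days):
    """Compressed performance-monitoring storage, in bytes."""
    uncompressed = ports * samples_per_day * pm_days * SAMPLE_SIZE_BYTES
    return uncompressed * COMPRESSION_RATIO


def backup_storage_bytes(daily_backup_mb=60, backup_days=14, backup_months=12):
    """Backup storage, in bytes, using the defaults from the assumptions list."""
    return daily_backup_mb * BYTES_PER_MB * backup_days * backup_months


def total_storage_bytes(ports, samples_per_day, pm_days):
    """Storage = compressed PM data + backups."""
    return pm_storage_bytes(ports, samples_per_day, pm_days) + backup_storage_bytes()


if __name__ == "__main__":
    # Hypothetical deployment: 1000 ports, one sample every 15 minutes, 30 days.
    total = total_storage_bytes(ports=1000, samples_per_day=96, pm_days=30)
    print(f"Estimated storage: {total / BYTES_PER_MB:.1f} MB")
```

Adjust the sample inputs to the actual number of client ports and the retention period used in your deployment.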

Crosswork Hierarchical Controller certified scaling.

| Component | Maximum Certified |
|---|---|
| Total number of devices | 10,000 |
| Total number of L2 links | 39,127 |
| Total number of L3 links | 48,522 |
| Total physical interfaces | 230,014 |
| Total logical interfaces | 320,061 |
| Total LAG interfaces | 169,898 |
| Total L2 VPN services | 51,038 |
| Total L3 VPN services | 49,931 |

Note: The scale figures presented above were observed during integration with Cisco Hierarchical Controller (HCO) version 11.0 and Crosswork Network Controller (CNC) version 7.1.0. These results were based on use cases using the Crosswork Hierarchical Controller Network Inventory and SHQL applications. Functionality of Crosswork Hierarchical Controller applications may be limited in large-scale environments. Support for these applications or additional controllers is provided on a best-effort basis and may vary depending on deployment size and use case.

The following list contains the prerequisites for installing Cisco Crosswork Hierarchical Controller.

Before installing Cisco Crosswork Hierarchical Controller:

● For ESXi, install the ESXi host on servers with vSphere to support creating VMs.

● For KVM, install RHEL 9.4 with the required VM management utilities (virt-manager and virsh).

● Create three networks and assign each to a separate zone:

◦ The northbound network is used for communication between the client and a cluster.

◦ The eastbound network is used for communication between the nodes that form the supercluster.

◦ The control plane network helps in the communication between the deployed VMs within a cluster.

Note: Although the control plane network is not in use for the standalone or supercluster 1+1+1 configuration, it should still be created.

To create the networks:

1. For ESXi, from the vSphere client, select the Datacenter where you want to add the ESXi host.

2. For KVM:

● Ensure that the bare-metal servers have CPU virtualization extensions (for example, AMD-V or Intel VT-x) enabled.

● Install RHEL 9.4, ensuring that there are multiple hosts to deploy multiple VMs in disparate locations.

● After the installation, ensure that the KVM module is recognized by the OS. For example, run lsmod and confirm that the kvm module is loaded.

● Register the RHEL subscription so that supported packages can be downloaded from the RHEL repositories.

● Install the user-space management utilities:

◦ virt-manager

◦ virsh

◦ virt-install

◦ virt-viewer

◦ libvirt

◦ virt-top

◦ libguestfs-tools

● Proceed to load the NXF-based QCOW2 images and run them on the KVM hypervisor. See Install Crosswork Hierarchical Controller using KVM.

3. After adding the host, create the networks before deploying the standalone or supercluster:

◦ Standalone: Three IPs (v4). Assign a VIP for the northbound interface (for standalone, the VIP and northbound IP are the same), another real IP for the control plane, and a dummy IP for the eastbound interface.

◦ Supercluster: Seven IPs (v4). Assign three IPs each for the northbound and eastbound networks for the active single-node cluster, standby single-node cluster, and single witness (arbitrator) node, and a VIP. The VIP is the IP that exposes the active single-node cluster to the user.
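For the KVM host checks in step 2 above (CPU virtualization support and the kvm module), the following Python sketch shows one way to inspect a Linux host. It assumes the standard /proc/cpuinfo and /proc/modules files are readable; the function names are hypothetical, and the vmx and svm CPU flags are the usual Linux indicators for Intel VT-x and AMD-V.

```python
# Sketch of KVM host pre-checks on Linux. Function names are hypothetical.
from pathlib import Path


def cpu_virtualization_flags(cpuinfo_text):
    """Return the virtualization flags found: vmx (Intel VT-x) or svm (AMD-V)."""
    found = set()
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags") and ":" in line:
            flags = line.split(":", 1)[1].split()
            found.update(flag for flag in flags if flag in ("vmx", "svm"))
    return found


def kvm_module_loaded(modules_text):
    """Return True if a module named kvm is listed (same data that lsmod shows)."""
    return any(
        line.split()[0] == "kvm"
        for line in modules_text.splitlines()
        if line.strip()
    )


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    modules = Path("/proc/modules")
    if cpuinfo.exists() and modules.exists():
        print("Virtualization flags:", cpu_virtualization_flags(cpuinfo.read_text()))
        print("kvm module loaded:", kvm_module_loaded(modules.read_text()))
    else:
        print("This check only applies to Linux hosts.")
```

An empty set of flags usually means that virtualization is disabled in the firmware or is not supported by the CPU.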

Cisco Crosswork Hierarchical Controller Supercluster Requirements

BGP Installation Requirements

Supercluster:

● BGP is used for traffic routing to the virtual IP from the various locations.

● Configure the BGP password (

SSH access to Crosswork Hierarchical Controller requires a public and private SSH key pair that is generated before deployment:

● The public SSH key is passed as a parameter during OVA deployment

● The private key is required to execute the remote SSH login

● The SSH key must be of type ed25519

To generate the keys:

1. Execute the ssh-keygen command:

# ssh-keygen -t ed25519 -f <PATH>/<keyname>

This generates the public and private keys:

◦ <PATH>/<keyname> (private key)

◦ <PATH>/<keyname>.pub (public key)

2. Remove the comment from the public key before using it during the OVA or QCOW2 deployment.
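Removing the comment from the public key can be sketched in Python as follows. This assumes the usual OpenSSH public key layout of key type, base64 data, and an optional trailing comment on a single line. The function name and the sample key string are hypothetical placeholders, not a real key.

```python
# Sketch: strip the trailing comment from an OpenSSH ed25519 public key line.
# The function name and the sample key below are hypothetical.


def strip_key_comment(public_key_line):
    """Return '<type> <base64-data>' without the optional comment field."""
    parts = public_key_line.split()
    if len(parts) < 2 or parts[0] != "ssh-ed25519":
        raise ValueError("expected an ssh-ed25519 public key line")
    return " ".join(parts[:2])


if __name__ == "__main__":
    sample = "ssh-ed25519 AAAAC3NzaC1lZDI1NTE5AAAAIplaceholder admin@workstation"
    print(strip_key_comment(sample))
```

The resulting string is what would be supplied as the public key value during deployment.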

Install Crosswork Hierarchical Controller using ESXi

Install Standalone Crosswork Hierarchical Controller using ESXi

When you deploy the OVA template it installs the Crosswork Hierarchical Controller platform and the various Crosswork Hierarchical Controller applications.

Note: It is suggested that you keep track of all settings in a spreadsheet before proceeding.

To install Crosswork Hierarchical Controller:

1. Right-click on the ESXi host in the vCenter vSphere Client screen, and then click Deploy OVF Template.

2. On the Select an OVF template page, specify the location of the source OVA template:

◦ URL: A URL to an OVA template located online.

◦ Local file: A location with the OVA template.

3. Click Next.

4. On the Select a name and folder page, specify a unique name for the VM Instance. The Virtual machine name must be a valid DNS name:

◦ contain no more than 253 characters

◦ contain only lowercase alphanumeric characters, '-' or '.'

◦ start with an alphanumeric character

◦ end with an alphanumeric character

5. From the list of options select the location of the VM to be used.

6. Click Next.

7. On the Select a compute resource page, select the destination compute resource on which you want to deploy the VM.

Note: After you select the compute resource, wait until the compatibility check completes successfully.

8. Click Next.

9. On the Review details page, verify the template details.

10. Click Next.

11. On the Select storage page, set the Select virtual disk format based on SSD.

12. Leave the VM Storage Policy set to Datastore Default.

13. Select the storage.

14. Click Next.

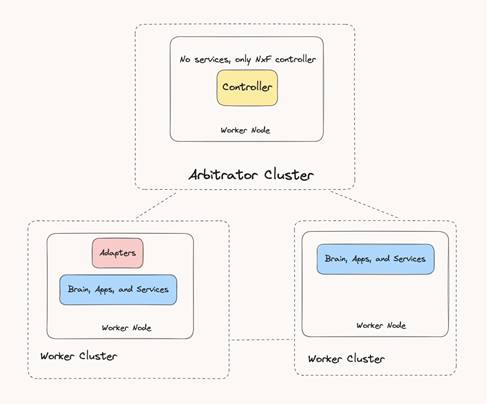

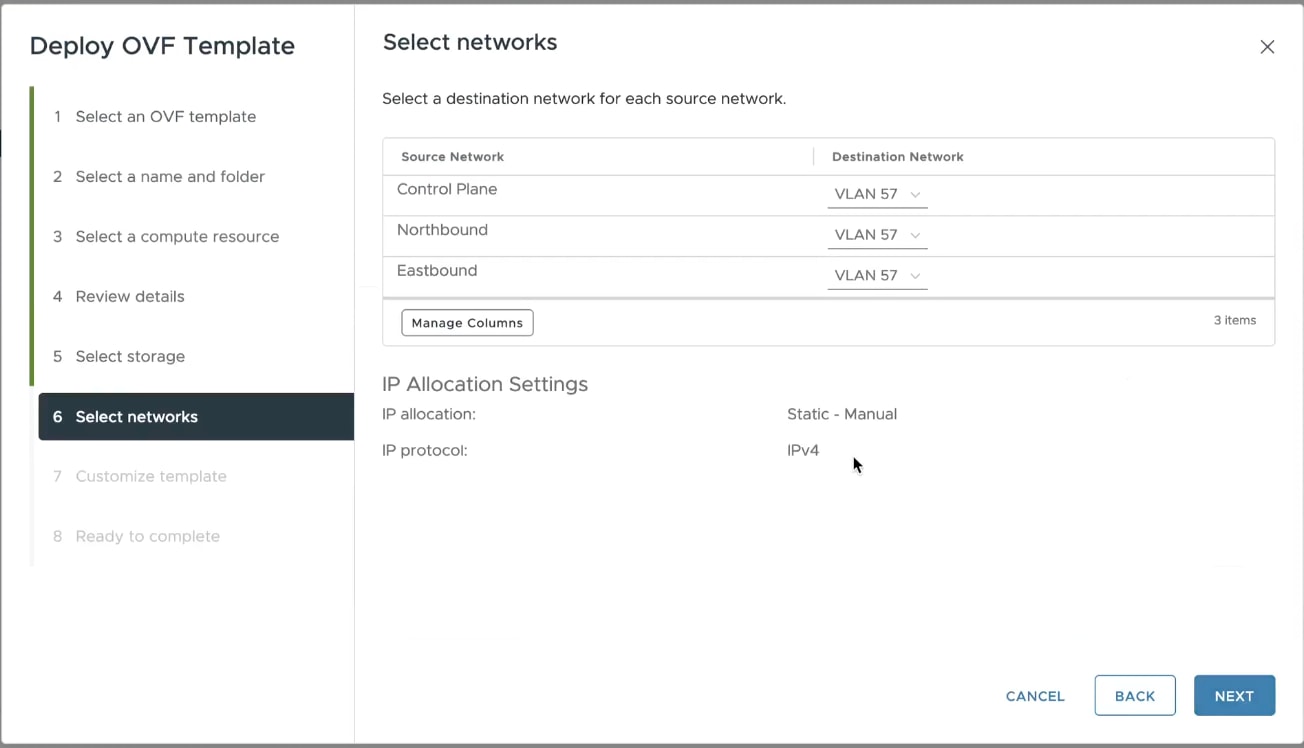

15. In the Select networks page, set the destination networks:

◦ Control Plane: The control plane network.

◦ Northbound: The VM network used for the VIP address for RESTCONF or UI access.

◦ Eastbound: The VM network used for communication between the nodes that form the supercluster.

16. Click Next.

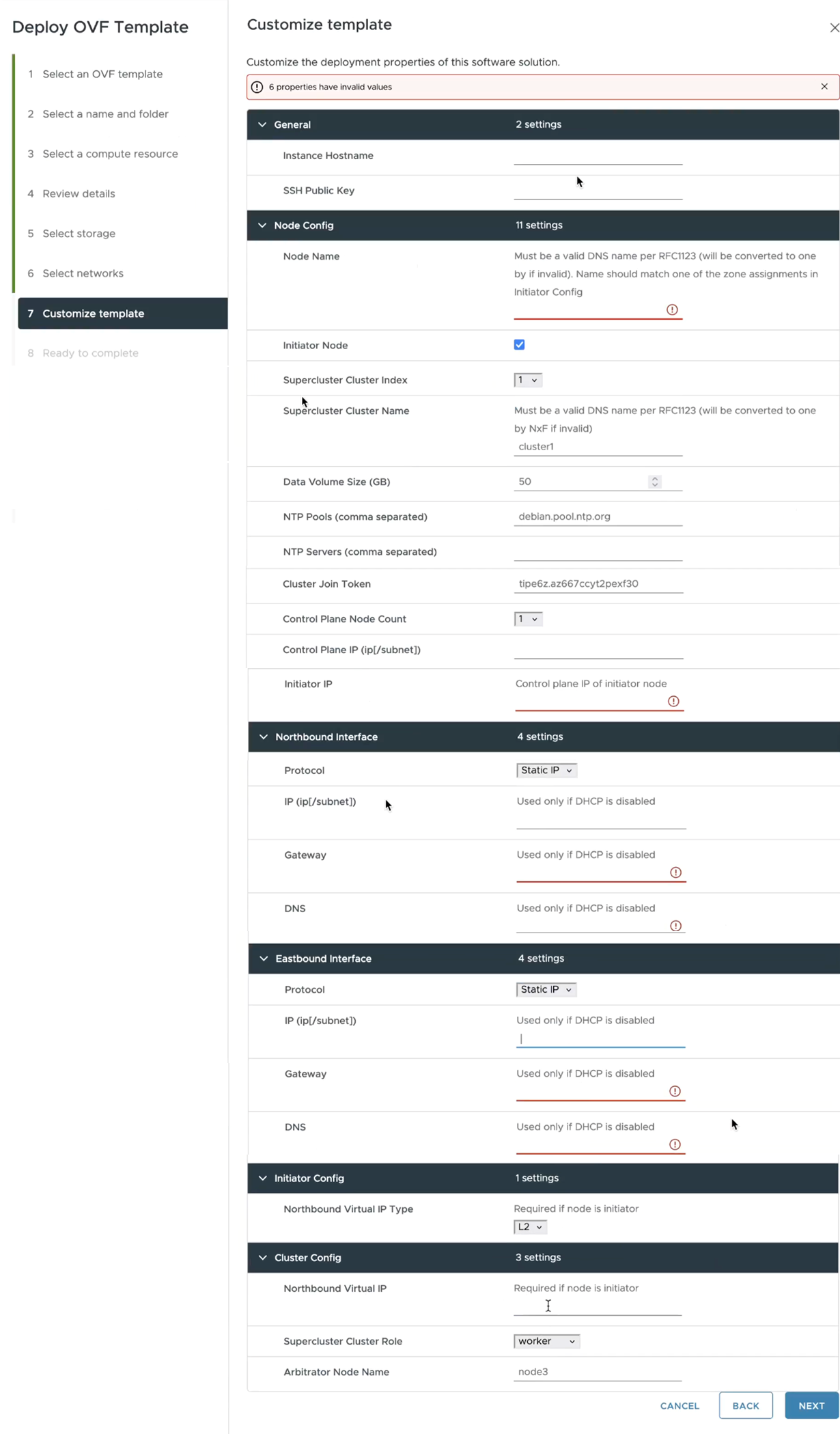

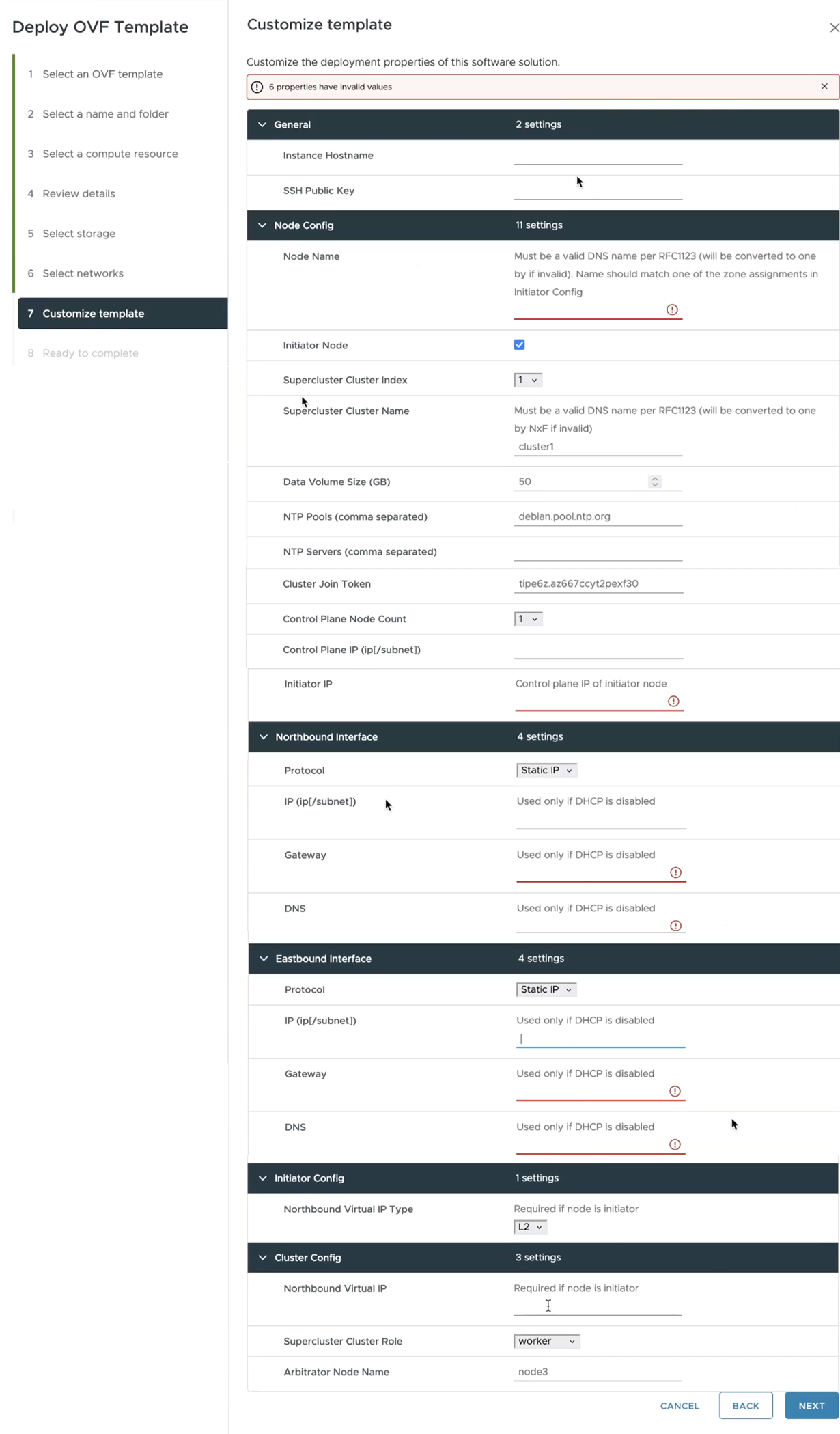

17. In the Customize template page, set the values as follows:

| Key | Value |
|---|---|
| General | |
| Instance Hostname | The instance hostname. This is the same name as specified on the Select a name and folder page and must be a valid DNS name. |
| SSH Public Key | The SSH public key generated by the customer’s admin. For example: ssh-keygen -t ed25519 -f ~/.ssh/... |
| Node Config | |
| Node Name | The standalone node name. This must be a valid DNS name that contains no more than 253 characters, contains only lowercase alphanumeric characters, '-' or '.', and starts and ends with an alphanumeric character. This must exist in the zone config, that is, the name must match one of the zone assignments in the Initiator Config. |
| Initiator Node | This is checked by default. Leave as is. The standalone node will be the initiator. |
| Supercluster Cluster Index | The supercluster cluster index. Leave as is. |
| Supercluster Cluster Name | The supercluster cluster name. Must be a valid DNS name per RFC1123. Leave as is. |
| Data Volume Size (GB) | The data storage limit set for the host. See Requirements. Must be at least 500. |
| NTP Pools (comma separated) | (Optional) A comma-separated list of the NTP pools. |
| NTP Servers (comma separated) | (Optional) A comma-separated list of the NTP servers. |
| Cluster Join Token | This is filled in automatically. Leave as is. |
| Control Plane Node Count | Select 1. |
| Control Plane IP (ip[/subnet]) | The private IP for the node. For standalone, this must be a local valid IP in the customer’s hypervisor. |
| Initiator IP | For standalone, use the same IP set as the control plane IP. |
| Northbound Interface | |
| Protocol | Select Static IP or DHCP from the menu. |
| IP(ip[/subnet]) - if not using DHCP | The public IP and subnet mask (in CIDR notation, that is, X.X.X.X/nn) for the instance northbound network if not using DHCP. Note: The subnet mask is mandatory. |
| Gateway - if not using DHCP | The gateway IP for the instance northbound network if not using DHCP. |
| DNS | The DNS server IP. |
| Eastbound Interface | |
| Protocol | Select Static IP or DHCP from the menu. |
| IP(ip[/subnet]) - if not using DHCP | The public IP and subnet mask (in CIDR notation, that is, X.X.X.X/nn) for the instance eastbound network if not using DHCP. For standalone this can be the same as the control plane IP. Note: The subnet mask is mandatory. |
| Gateway - if not using DHCP | The gateway IP for the instance eastbound network if not using DHCP. |
| DNS | The DNS server IP. |
| Initiator Config | Complete the entry for the standalone node |
| Northbound Virtual IP Type | The northbound IP type. Set this to L2. Required as the standalone node is the initiator. |
| Cluster Config | Complete the entry for the standalone node |
| Northbound Virtual IP | The IP of the standalone instance used for RESTCONF or UI access. Required as the standalone node is the initiator. This is the same as the IP(ip[/subnet]) - if not using DHCP. |
| Supercluster Cluster Role | Leave as is (worker). |
| Arbitrator Node Name | Leave as is. The default can be used as long as it differs from the Node Name value. |

18. Click Next.

19. In the Ready to complete page, check the selections.

20. Copy and save the properties as a backup.

21. Click Finish.

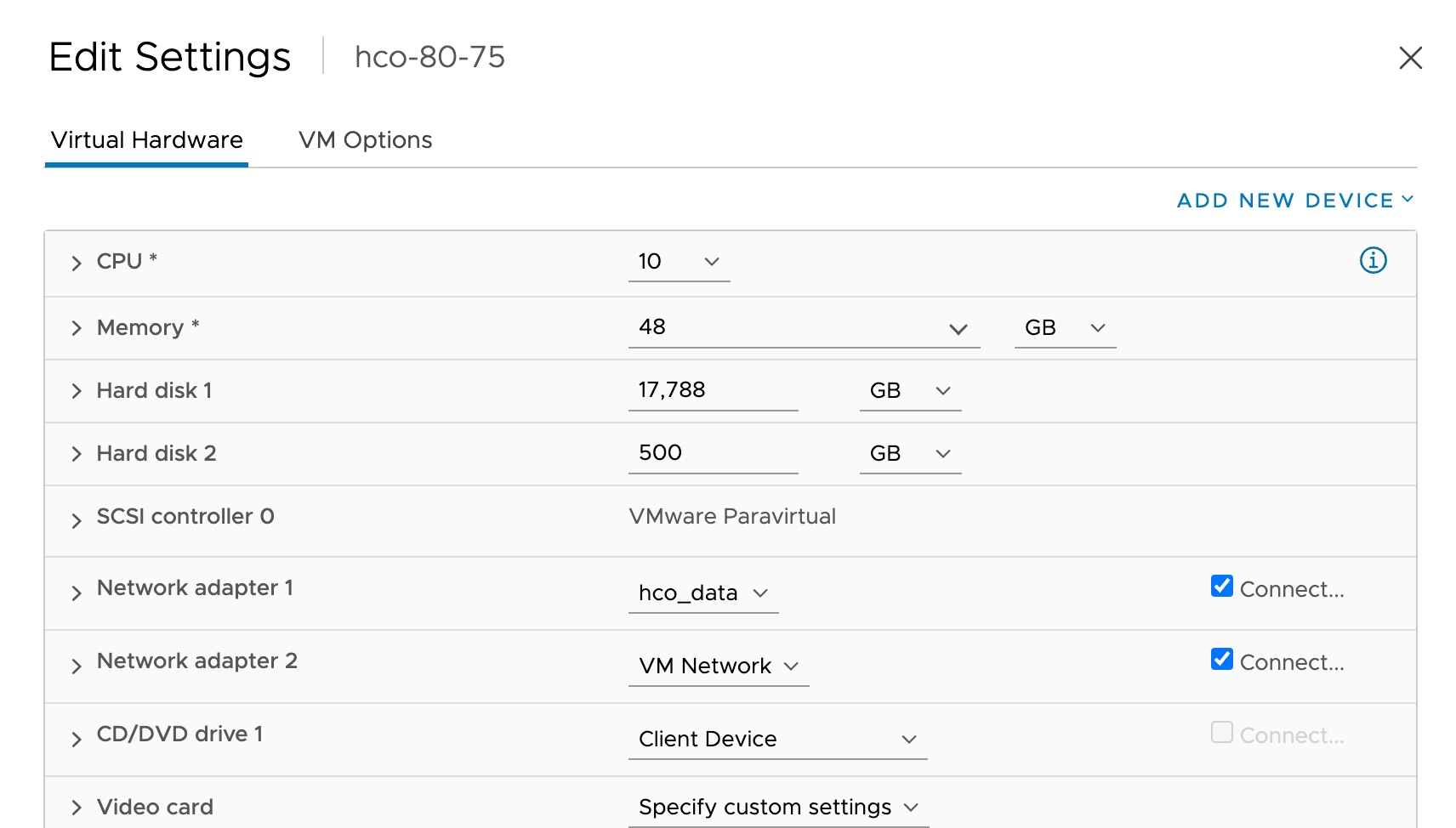

22. Right-click on the VM in the vSphere Client screen and select Edit Settings.

23. For CPU, select 10 and for Memory select 96.

24. Edit the CPU Resources and set the Reservation to 100%.

25. Edit the Memory Resources and set the Reservation to 100%.

26. Click OK.

27. Power on the VM. It may take a few minutes to get SSH access.

28. Connect to the VM using the private key that matches the public key entered in the SSH Public Key field during template customization:

# ssh -i <private-key_file> nxf@<hco_management_ip>

◦ If you are prompted for a password, there is probably a problem with the key.

◦ If the command times out, check the IP setting.

29. Run the following command to check the system status:

sedo system status

30. Change the default password and access string of the admin user:

sedo security user set --access role/admin --password admin

Note: You will be prompted to provide a new password.

31. You can use sedo to configure the local users. For more information, see the Crosswork Hierarchical Controller Administration Guide.

32. Browse to the Crosswork Hierarchical Controller application using the Northbound Virtual IP and port 8443:

https://[Northbound Virtual IP]:8443/
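The DNS name rules that the procedure above lists for the VM name and the Node Name can be checked before deployment. The following Python sketch implements only the four rules stated in this guide (length, allowed characters, first character, last character). The function name is hypothetical, and the check does not enforce any additional DNS constraints such as per-label length limits.

```python
# Sketch: validate a name against the four DNS name rules listed in this guide.
# The function name is hypothetical.
import re

# Starts and ends with a lowercase alphanumeric character; in between only
# lowercase alphanumeric characters, '-' or '.' are allowed.
_DNS_NAME = re.compile(r"[a-z0-9]([a-z0-9.-]*[a-z0-9])?")


def is_valid_dns_name(name):
    """Return True if the name satisfies the rules listed in this guide."""
    return len(name) <= 253 and _DNS_NAME.fullmatch(name) is not None


if __name__ == "__main__":
    for candidate in ("hco-node-1", "HCO-Node", "-hco", "hco."):
        print(candidate, is_valid_dns_name(candidate))
```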

Install Supercluster 1+1+1 Crosswork Hierarchical Controller using ESXi

This procedure describes deploying a geo-redundancy supercluster in a 1+1+1 scenario, which includes:

● active single-node cluster

● standby single-node cluster

● single witness (arbitrator) node

Prerequisites: BGP is used for traffic routing to the virtual IP from the various locations.

Note: Clusters cannot be added or removed, and their roles cannot be changed, after they join the supercluster.

Important: All clusters in the supercluster must be up and running. Also, the supercluster must be active to perform any of these tasks:

● Backup

● Restore

● Service Pack Installation

● Upgrades

To install Crosswork Hierarchical Controller:

1. Right-click on the ESXi host in the vSphere Client screen, and then click Deploy OVF Template.

2. On the Select an OVF template page, specify the location of the source OVA template:

◦ URL: A URL to an OVA template located online.

◦ Local file: A location with the OVA template.

3. Click Next.

4. On the Select a name and folder page, specify a unique name for the VM Instance. The Virtual machine name must be a valid DNS name:

◦ contain no more than 253 characters

◦ contain only lowercase alphanumeric characters, '-' or '.'

◦ start with an alphanumeric character

◦ end with an alphanumeric character

5. From the list of options select the location of the VM to be used.

6. Click Next.

7. On the Select a compute resource page, select the destination compute resource on which you want to deploy the VM.

Note: After you select the compute resource, wait until the compatibility check completes successfully.

8. Click Next.

9. On the Review details page, verify the template details.

10. Click Next.

11. On the Select storage page, set the Select virtual disk format based on SSD.

12. Leave the VM Storage Policy set to Datastore Default.

13. Select the storage.

14. Click Next.

15. In the Select networks page, set the destination networks:

◦ Control Plane: The control plane network. This may be the same for the active single-node cluster, standby single-node cluster, and single witness (arbitrator) node. This is not in use for the 1+1+1 configuration.

◦ Northbound: The VM network used for the VIP address for RESTCONF or UI access. This is different for the active single-node cluster, standby single-node cluster, and single witness (arbitrator) node.

◦ Eastbound: The VM network used for communication between the nodes that form the supercluster. This is different for the active single-node cluster, standby single-node cluster, and single witness (arbitrator) node.

16. Click Next.

17. In the Customize template page, set the values as follows:

| Key | Value |
|---|---|
| General | |
| Instance Hostname | The instance hostname. This is the same name as specified on the Select a name and folder page and must be a valid DNS name. |
| SSH Public Key | The SSH public key generated by the customer’s admin. For example: ssh-keygen -t ed25519 -f ~/.ssh/... |
| Node Config | |
| Node Name | The node name. This must be a valid DNS name that contains no more than 253 characters, contains only lowercase alphanumeric characters, '-' or '.', and starts and ends with an alphanumeric character. This must exist in the zone config, that is, the name must match one of the zone assignments in the Initiator Config. |
| Initiator Node | This is checked by default. Leave checked. |
| Supercluster Cluster Index | The supercluster cluster index. Set to 1 (active cluster), 2 (standby cluster), or 3 (arbitrator). |
| Supercluster Cluster Name | The cluster name. Must be a valid DNS name per RFC1123. This is different for the active single-node cluster, standby single-node cluster, and single witness (arbitrator) node. |
| Data Volume Size (GB) | The data storage limit set for the host. See Requirements. Must be at least 500. |
| NTP Pools (comma separated) | (Optional) A comma-separated list of the NTP pools. |
| NTP Servers (comma separated) | (Optional) A comma-separated list of the NTP servers. |
| Cluster Join Token | This is filled in automatically. Leave as is. |
| Control Plane Node Count | Select 1 for all nodes (for supercluster 1+1+1) |
| Control Plane IP (ip[/subnet]) | The private IP for the node. Not in use externally for supercluster 1+1+1. Can be a dummy IP. |
| Initiator IP | The same IP as the control plane IP. |
| Northbound Interface | |
| Protocol | Select Static IP or DHCP from the menu. |
| IP(ip[/subnet]) - if not using DHCP | The public IP and subnet mask (in CIDR notation, that is, X.X.X.X/nn) for the instance northbound network if not using DHCP. |
| Gateway - if not using DHCP | The gateway IP for the instance northbound network if not using DHCP. |
| DNS | The DNS server IP. |
| Eastbound Interface | |
| Protocol | Select Static IP or DHCP from the menu. |
| IP(ip[/subnet]) - if not using DHCP | The public IP and subnet mask (in CIDR notation, that is, X.X.X.X/nn) for the instance eastbound network if not using DHCP. For standalone this can be the same as the control plane IP. Note: The subnet mask is mandatory. |
| Gateway - if not using DHCP | The gateway IP for the instance eastbound network if not using DHCP. |
| DNS | The DNS server IP. |
| Initiator Config | |
| Northbound Virtual IP Type | The northbound IP type. Set this to L3. |
| Cluster Config | |
| Northbound Virtual IP | The IP used for RESTCONF or UI access. This is the same for the active single-node cluster, standby single-node cluster, and single witness (arbitrator) node. |
| Supercluster Cluster Role | Set to worker (active cluster), worker (standby cluster), or arbitrator (arbitrator). |
| Arbitrator Node Name | Leave the default value. The default can be used as long as it differs from the Node Name value. Not in use for the 1+1+1 configuration. |

18. Click Next.

19. In the Ready to complete page, check the selections.

20. Copy and save the properties as a backup.

21. Click Finish.

22. Right-click on the VM in the vSphere Client screen and select Edit Settings.

23. For CPU, select 10 and for Memory select 96.

24. Edit the CPU Resources and set the Reservation to 100%.

25. Edit the Memory Resources and set the Reservation to 100%.

26. Click OK.

27. Power on the VM. It may take a few minutes to get SSH access.

28. Connect to the VM using the private key that matches the public key entered in the SSH Public Key field during template customization:

# ssh -i <private-key_file> nxf@<hco_management_ip>

◦ If you are prompted for a password, there is probably a problem with the key.

◦ If the command times out, check the IP setting.

29. Run the following commands to get the supercluster routing up and running:

Region 1:

sedo ha bgp init <region1_node_name> <region1_northbound_ip> <BGP_AS_1>

sedo ha bgp router add <region1_node_name> <region1_northbound_gateway> <BGP_AS> <BGP_session_password>

Region 2:

sedo ha bgp init <region2_node_name> <region2_northbound_ip> <BGP_AS_2>

sedo ha bgp router add <region2_node_name> <region2_northbound_gateway> <BGP_AS> <BGP_session_password>

In both regions:

sedo ha bgp router list <region{n}_node_name>

30. Run the following command on each node and record the CLUSTER_ID:

sudo sedo supercluster status

31. On the Region 1 node, run the following command to generate a sudo command to run on the Region 2 node:

Region 1 (local) > Region 2 (remote):

sudo sedo supercluster wait-for -b <region1_node_eastboundIP>:10443 <region2_node_CLUSTER_ID>

32. On the Region 2 node, run the generated command.

33. On the Region 1 node, run the following command to generate a sudo command to run on the Region 3 node:

Region 1 (local) > Region 3 (remote):

sudo sedo supercluster wait-for -b <region1_node_eastboundIP>:10443 <region3_node_CLUSTER_ID>

34. On the Region 3 node, run the generated command.

35. On the Region 2 node, run the following command to generate a sudo command to run on the Region 3 node:

Region 2 (local) > Region 3 (remote):

sudo sedo supercluster wait-for -b <region2_node_eastboundIP>:10443 <region3_node_CLUSTER_ID>

36. On the Region 3 node, run the generated command.

37. Run the following command on each node to view the peers:

sudo sedo supercluster status

38. Run the following command to check the supercluster connectivity:

sudo sedo supercluster connectivity

39. In region 1, run the following command to start the supercluster (this may take a few minutes):

sudo sedo supercluster start

40. Once the connectivity check succeeds, run the following command to confirm that the supercluster status is started:

sudo sedo supercluster status

41. Run the following command to view the BGP configuration:

sedo ha bgp show

42. Change the default password and access string of the admin user:

sedo security user set --access role/admin --password admin

Note: You will be prompted to provide a new password.

43. You can use sedo to configure the local users. For more information, see the Crosswork Hierarchical Controller Administration Guide.

44. Browse to the Crosswork Hierarchical Controller application using the Northbound Virtual IP and port 8443:

https://[Northbound Virtual IP]:8443/
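The three wait-for commands in the procedure above follow a fixed pairing (Region 1 to Region 2, Region 1 to Region 3, Region 2 to Region 3). As a planning aid, the following Python sketch assembles those command strings from recorded values. The command text is taken from the steps above; the function name, the dictionary layout, and the sample eastbound IPs and cluster IDs are hypothetical.

```python
# Sketch: assemble the supercluster wait-for commands for a 1+1+1 deployment.
# The dictionary keys and sample values are hypothetical placeholders.
from itertools import combinations


def wait_for_commands(nodes):
    """Return (local_region, command) pairs for Region 1>2, 1>3 and 2>3."""
    commands = []
    for local, remote in combinations(nodes, 2):
        command = (
            "sudo sedo supercluster wait-for -b "
            f"{local['eastbound_ip']}:10443 {remote['cluster_id']}"
        )
        commands.append((local["region"], command))
    return commands


# Replace these with the eastbound IPs and the CLUSTER_ID values recorded
# from "sudo sedo supercluster status" on each node.
nodes = [
    {"region": 1, "eastbound_ip": "192.0.2.11", "cluster_id": "cluster-id-1"},
    {"region": 2, "eastbound_ip": "192.0.2.12", "cluster_id": "cluster-id-2"},
    {"region": 3, "eastbound_ip": "192.0.2.13", "cluster_id": "cluster-id-3"},
]

if __name__ == "__main__":
    for region, command in wait_for_commands(nodes):
        print(f"Run on Region {region}: {command}")
```

Each printed command is run on the local region and produces the command to run on the remote region, as described in the steps above.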

Install Crosswork Hierarchical Controller using KVM

Prepare KVM Config Files for Standalone

meta-data Standalone

The SSH public key.

Sample

********************************

meta-data sample:

********************************

    instance-id: <instance_id>
    local-hostname: <host_name>
    public-keys:
      - <public_key>

| Key | Value |
|---|---|
| instance-id | The instance ID. |
| local-hostname | The instance host name. |
| public-keys | The SSH public key generated by the customer’s admin. |
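The meta-data file is small enough to generate from a script. The following Python sketch renders the three keys described in the table above. The function name, output path, and sample values are hypothetical, and the public key shown is a placeholder rather than a real key.

```python
# Sketch: render the meta-data file content. Names and values are hypothetical.
from pathlib import Path


def render_meta_data(instance_id, hostname, public_key):
    """Return the meta-data content with the three keys used in this guide."""
    return (
        f"instance-id: {instance_id}\n"
        f"local-hostname: {hostname}\n"
        "public-keys:\n"
        f"  - {public_key}\n"
    )


if __name__ == "__main__":
    content = render_meta_data(
        instance_id="hco-standalone-1",
        hostname="hco-standalone-1",
        public_key="ssh-ed25519 AAAAC3NzaC1lZDI1NTE5AAAAIplaceholder",
    )
    Path("meta-data").write_text(content)  # written to the current directory
    print(content)
```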

network-config Standalone

The network configuration file including the DNS configuration, the northbound, eastbound, and control plane networks, and the BGP router details.

Note: Although the control plane network is not in use for the standalone or supercluster 1+1+1 configuration, it should still be created.

Sample

********************************

network-config sample:

********************************

    version: 2
    ethernets:
      enp1s0:
        dhcp4: <false>/<true>
        addresses:
          - <control_plane_ip/subnet_mask>
      enp2s0:
        dhcp4: <false>/<true>
        addresses:
          - <northbound_ip/subnet_mask>
        gateway4: <gateway_ip>
        nameservers:
          addresses:
            - <dns_server_ip>
      enp3s0:
        dhcp4: <false>/<true>
        addresses:
          - <eastbound_ip/subnet_mask>

| Key | Value |
|---|---|
| version | The config file version. |
| ethernets | |
| enp1s0 | The control plane network IP (and subnet mask) and whether DHCP is enabled. enp1s0: dhcp4: <false>/<true> addresses: - <control_plane_ip/subnet_mask> |
| enp2s0 | The northbound network IP (and subnet mask) and whether DHCP is enabled, and the BGP router. enp2s0: dhcp4: <false>/<true> addresses: - <northbound_ip/subnet_mask> gateway4: <gateway_ip> nameservers: addresses: - <dns_server_ip> |
| enp3s0 | The eastbound network IP (and subnet mask) and whether DHCP is enabled. enp3s0: dhcp4: <false>/<true> addresses: - <eastbound_ip/subnet_mask> |
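Before writing static addresses into network-config, it can help to confirm that each address includes a subnet mask and that the gateway falls inside the same subnet. The following Python sketch uses the standard ipaddress module for this. The function name and sample addresses are hypothetical, and the gateway check is an added sanity check rather than a requirement stated in this guide.

```python
# Sketch: validate a static interface address (CIDR) and optional gateway.
# The function name and sample addresses are hypothetical.
import ipaddress


def check_interface(address_cidr, gateway=None):
    """Return the parsed interface, or raise ValueError if a check fails."""
    if "/" not in address_cidr:
        raise ValueError(f"{address_cidr}: subnet mask is missing")
    interface = ipaddress.ip_interface(address_cidr)
    if gateway is not None and ipaddress.ip_address(gateway) not in interface.network:
        raise ValueError(f"gateway {gateway} is outside {interface.network}")
    return interface


if __name__ == "__main__":
    northbound = check_interface("192.0.2.10/24", gateway="192.0.2.1")
    print("Northbound network:", northbound.network)
```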

user-data Standalone

The optional NTP configuration, number of control planes, cluster configuration parameters, and the local users.

Sample

********************************

user-data sample:

********************************

#cloud-config

#NxF User Data

fs_setup:

- label: data

device: /dev/vdb

filesystem: ext4

mounts:

- ["/dev/vdb", "/data"]

ntp:

enabled: <true>

ntp_client: chrony

servers:

- <ntp_server>

nxf:

minControlPlaneCount: 1

clusterIndex: 1

clusterName: <cluster_name>

node:

name: <node_name>

initiator: <control_plane_ip> # Initiator control plane IP

joinToken: <join_token>

controlPlaneInterface: enp1s0

eastboundInterface: enp3s0

vip:

northbound:

interface: enp2s0

initiator:

vip:

northbound:

ip: <vip>

type: L2

kafka:

enabled: true

joinToken: <join_token>

arbitratorNode: <arbitrator_node>

clusterRole: worker

security:

localUsers:

- username: admin

displayName: NxF Admin

description: NextFusion Default Administrator

locked: true

mustChangePassword: false

expiresInDays: 0

access:

- permission/admin

| Key | Value |
|---|---|
| ntp | The NTP configuration. A list of the NTP servers. If not created, uses a default NTP server. ntp: enabled: true ntp_client: chrony servers: - <ntp_server> |
| fs_setup | fs_setup: - label: data device: /dev/vdb filesystem: ext4 |
| mounts | mounts: - ["/dev/vdb", "/data"] |
| nxf | The configuration for standalone: nxf: |
| minControlPlaneCount | The control plane count. For standalone, this is 1. minControlPlaneCount: 1 |
| clusterIndex | The cluster index. For standalone, this is 1. Set to 1 (active cluster), 2 (standby cluster), or 3 (arbitrator). clusterIndex: <cluster_index> |
| clusterName | The cluster name. Must be a valid DNS name per RFC1123. clusterName: <cluster_name> |
| node | The standalone node name. This must be a valid DNS name that contains no more than 253 characters, contains only lowercase alphanumeric characters, '-' or '.', and starts and ends with an alphanumeric character. The initiator IP. The standalone node is the initiator. The join token random string. The control plane, eastbound, and northbound interfaces (specified in network-config). node: name: <node_name> initiator: <control_plane_ip> joinToken: <join_token> controlPlaneInterface: enp1s0 eastboundInterface: enp3s0 vip: northbound: interface: enp2s0 |
| vip | The VIP address. For standalone, the VIP and northbound IP are the same. vip: northbound: ip: <vip> type: L2 kafka: enabled: true |
| kafka | The kafka configuration. kafka: enabled: true |
| joinToken | The join token random string. |
| arbitratorNode | The arbitrator node name. Not used for standalone but must be specified as a name different from the standalone node name. |
| clusterRole | Must be set to worker for standalone. |
| security | The security configuration including the local users. For more information, see the Crosswork Hierarchical Controller Administration Guide. security: localUsers: - username: admin displayName: NxF Admin description: NextFusion Default Administrator locked: true mustChangePassword: false expiresInDays: 0 access: - permission/admin |
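Several of the standalone constraints in the table above are easy to get wrong when editing user-data by hand. The following Python sketch checks them from plain values rather than by parsing the file, since the exact nesting of the user-data keys is not restated here. The function name and sample values are hypothetical.

```python
# Sketch: check standalone user-data values against the constraints in this
# guide. The function name and sample values are hypothetical.


def check_standalone_settings(node_name, arbitrator_node, cluster_role,
                              min_control_plane_count, vip_type):
    """Return a list of problems; an empty list means the values look consistent."""
    problems = []
    if arbitrator_node == node_name:
        problems.append("arbitratorNode must differ from the standalone node name")
    if cluster_role != "worker":
        problems.append("clusterRole must be worker for standalone")
    if min_control_plane_count != 1:
        problems.append("minControlPlaneCount must be 1 for standalone")
    if vip_type != "L2":
        problems.append("northbound VIP type must be L2 for standalone")
    return problems


if __name__ == "__main__":
    issues = check_standalone_settings(
        node_name="hco-node-1",
        arbitrator_node="hco-arbitrator",
        cluster_role="worker",
        min_control_plane_count=1,
        vip_type="L2",
    )
    print(issues or "No problems found")
```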

Install Standalone Crosswork Hierarchical Controller using KVM

When you deploy the QCOW2 file, it installs the Crosswork Hierarchical Controller platform and the various Crosswork Hierarchical Controller applications.

To install Crosswork Hierarchical Controller:

1. Complete the prerequisites. See KVM Requirements.

2. Ensure that you have the required config files:

◦ network-config: The network configuration file including the DNS configuration, the northbound, eastbound, and control plane networks, and the BGP router details.

◦ user-data: The optional NTP configuration, number of control planes, cluster configuration parameters, and the local users.

◦ meta-data: The SSH public key.

3. Copy the three config files to a folder, for example, <PATH_TO_DEPLOY_DIR>/vmConfig/cloud-config/

4. Generate the ISO file using the above configuration files:

◦ mkisofs -o "

5. Install the node using the following command, specifying the following values:

● <HOST_NAME>: The Virtual Machine name.

● <CPUs>: The number of VM CPUs.

● <MEMORY>: The amount of VM memory.

● <PATH_TO_DEPLOY_DIR>: The deployment directory.

● <QCOW2_FILE_NAME>: The QCOW2 file name. Important! This is the disk of the VM and each VM must have a unique file name.

● <SIZE>: The size of the VM disk in GB.

● <Control_Plane_VLAN_ID>: The control plane network used for communication between local cluster nodes. Not intended for standalone use but must be configured.

● <Northbound_VLAN_ID>: The VM network used for the VIP address for RESTCONF or UI access.

● <Eastbound_VLAN_ID>: The VM network used for communication between the nodes that form the supercluster. Not intended for standalone use but must be configured.

virt-install \

--name <HOST_NAME> \

--vcpus <CPUs> \

--memory <MEMORY> \

--disk path=<PATH_TO_DEPLOY_DIR>/<QCOW2_FILE_NAME>.qcow2,format=qcow2 \

--disk path=<PATH_TO_DEPLOY_DIR>/<QCOW2_FILE_NAME>-data.qcow2,size=<SIZE>,device=disk,bus=virtio,format=qcow2 \

--disk path=<PATH_TO_DEPLOY_DIR>/vmConfig/cidata.iso,device=cdrom \

--osinfo debian12 \

--network bridge=<Control_Plane_VLAN_ID>,model=virtio \

--network bridge=<Northbound_VLAN_ID>,model=virtio \

--network bridge=<Eastbound_VLAN_ID>,model=virtio \

--boot loader=/usr/share/edk2/ovmf/OVMF_CODE.fd,loader.readonly=yes,loader_secure=no,nvram.template=/usr/share/edk2/ovmf/OVMF_VARS.fd,hd,uefi \

--serial pty \

--console pty,target_type=serial \

--noautoconsole

6. Wait for the VM to finish booting. It may take a few minutes before SSH access is available.

7. Log in to the VM using the private key that matches the public key specified in the meta-data file:

# ssh -i <private-key_file> nxf@<hco_management_ip>

◦ If you are prompted for a password, there is probably a problem with the key.

◦ If the command times out, check the IP settings.

8. Run the following command to check the system status:

sedo system status

9. Change the default password and access string of the admin user:

sedo security user set --access role/admin --password admin

Note: You will be prompted to provide a new password.

10. You can use sedo to configure the local users. For more information, see the Crosswork Hierarchical Controller Administration Guide.

11. Browse to the Crosswork Hierarchical Controller application using the Northbound Virtual IP and port 8443:

https://[Northbound Virtual IP]:8443/
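Steps 6 and 7 of the procedure above involve waiting until SSH access becomes available. That wait can be scripted with a small retry loop. The sketch below is illustrative only: the function name retry and the attempt and delay values are assumptions, not part of the product.

```shell
#!/bin/sh
# Hypothetical helper: retry ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Runs COMMAND until it succeeds or ATTEMPTS runs have failed.
retry() {
    attempts=$1
    delay=$2
    shift 2
    n=1
    while [ "$n" -le "$attempts" ]; do
        if "$@"; then
            return 0
        fi
        # Sleep between attempts, but not after the last one.
        if [ "$n" -lt "$attempts" ]; then
            sleep "$delay"
        fi
        n=$((n + 1))
    done
    return 1
}

# Example (placeholders as in the procedure; replace before running):
#   retry 30 10 ssh -i <private-key_file> -o BatchMode=yes \
#       -o ConnectTimeout=5 nxf@<hco_management_ip> true
```

The BatchMode=yes option makes ssh fail instead of prompting for a password, which corresponds to the key problem described in step 7, and ConnectTimeout bounds each attempt so that a wrong IP setting surfaces as a failed attempt.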

Prepare KVM Config Files for Supercluster

meta-data Supercluster

The SSH public key.

Sample

********************************

meta-data sample:

********************************

instance-id: <instance_id>

local-hostname: <host_name>

public-keys:

- <public_key>

| Key |

Value |

| local-hostname |

The instance host name. |

| public-keys |

The ssh public key generated by the customer’s admin. |
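The meta-data file described above can be produced from an existing public key file. This is a minimal sketch under stated assumptions: the function name write_meta_data is hypothetical, the indentation of the list item is a YAML formatting choice, and only the first line of the key file is used.

```shell
#!/bin/sh
# Hypothetical helper: write_meta_data OUT INSTANCE_ID HOST_NAME PUBKEY_FILE
# Writes a meta-data file with the three keys shown in the sample.
write_meta_data() {
    out=$1 instance_id=$2 host_name=$3 pubkey_file=$4
    if [ ! -r "$pubkey_file" ]; then
        echo "cannot read public key file: $pubkey_file" >&2
        return 1
    fi
    {
        printf 'instance-id: %s\n' "$instance_id"
        printf 'local-hostname: %s\n' "$host_name"
        printf 'public-keys:\n'
        # Use the first line of the key file as the single public key.
        printf '  - %s\n' "$(head -n 1 "$pubkey_file")"
    } > "$out"
}
```

A call such as write_meta_data meta-data hco-1 hco-node-1 ~/.ssh/id_ed25519.pub (all values illustrative) writes the file into the current directory, ready to be copied to the cloud-config folder.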

network-config Supercluster

The network configuration file including the DNS configuration, the northbound, eastbound, and control plane networks, and the BGP router details.

Note: Although the control plane network is not in use for the standalone or supercluster 1+1+1 configuration, it should still be created.

Sample

********************************

network-config sample:

********************************

version: 2

ethernets:

  enp1s0:

    dhcp4: <false>/<true>

    addresses:

      - <control_plane_ip/subnet_mask>

  enp2s0:

    dhcp4: <false>/<true>

    addresses:

      - <northbound_ip/subnet_mask>

    gateway4: <gateway_ip>

    nameservers:

      addresses:

        - <dns_server_ip>

  enp3s0:

    dhcp4: <false>/<true>

    addresses:

      - <eastbound_ip/subnet_mask>

| Key |

Value |

| version |

The config file version. |

| ethernets |

|

| enp1s0 |

The control plane network IP and whether DHCP is enabled. enp1s0: dhcp4: <false>/<true> addresses: - <control_plane_ip/subnet_mask> |

| enp2s0 |

The northbound network IP and whether DHCP is enabled, and the BGP router. enp2s0: dhcp4: <false>/<true> addresses: - <northbound_ip/subnet_mask> gateway4: <gateway_ip> nameservers: addresses: - <dns_server_ip> |

| enp3s0 |

The eastbound network IP and whether DHCP is enabled. enp3s0: dhcp4: <false>/<true> addresses: - <eastbound_ip/subnet_mask> |
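The nesting of the network-config keys described in the table above matters, because the file is YAML. The sketch below writes a network-config file with the three interfaces at the expected nesting levels. It is an illustration under assumptions: the function name write_network_config is hypothetical, static addressing (dhcp4: false) is assumed, and the addresses are expected in address/prefix form.

```shell
#!/bin/sh
# Hypothetical helper:
#   write_network_config OUT CP_CIDR NB_CIDR GATEWAY DNS EB_CIDR
# Writes a static (dhcp4: false) network-config for the three interfaces.
write_network_config() {
    out=$1 cp_cidr=$2 nb_cidr=$3 gateway=$4 dns=$5 eb_cidr=$6
    cat > "$out" <<EOF
version: 2
ethernets:
  enp1s0:
    dhcp4: false
    addresses:
      - $cp_cidr
  enp2s0:
    dhcp4: false
    addresses:
      - $nb_cidr
    gateway4: $gateway
    nameservers:
      addresses:
        - $dns
  enp3s0:
    dhcp4: false
    addresses:
      - $eb_cidr
EOF
}
```

Only the northbound interface (enp2s0) carries the gateway and DNS server entries, matching the table rows above.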

user-data Supercluster

The optional NTP configuration, number of control planes, cluster configuration parameters, and the local users.

Sample

********************************

user-data sample:

********************************

#cloud-config

#NxF User Data

fs_setup:

- label: data

device: /dev/vdb

filesystem: ext4

mounts:

- ["/dev/vdb", "/data"]

# NTP Block

# Optional. If not present, the default NTP server is used.

ntp:

enabled: true

ntp_client: chrony

servers:

- <ntp_server>

nxf:

minControlPlaneCount: 1 # Set to 1 for SC (1+1+1)

clusterIndex: 1 # Cluster Index in SC deployments, set to 1/2/3

clusterName: <cluster_name>

node:

name: <node_name>

initiator: <control_plane_ip> # Initiator control plane IP

joinToken: <join_token>

controlPlaneInterface: enp1s0

eastboundInterface: enp3s0

vip:

northbound:

interface: enp2s0

# Initiator Block

initiator:

vip:

northbound:

ip: <vip> # VIP Address

type: L3

kafka:

enabled: true

joinToken: <join_token>

arbitratorNode: my-node3 # Local cluster arbitrator node name

clusterRole: <cluster_role> # worker/ arbitrator

security:

localUsers:

- username: admin

displayName: NxF Admin

description: NextFusion Default Administrator

locked: true

mustChangePassword: false

expiresInDays: 0

access:

- permission/admin

| Key |

Value |

| ntp |

The NTP configuration. A list of the NTP servers. If not created, uses a default NTP server. ntp: enabled: true ntp_client: chrony servers: - <ntp_server> |

| fs_setup |

fs_setup: - label: data device: /dev/vdb filesystem: ext4 |

| mounts |

mounts: - ["/dev/vdb", "/data"] |

| nxf |

The configuration for supercluster: nxf: |

| minControlPlaneCount |

The control plane count. For supercluster, this is 1. minControlPlaneCount: 1 |

| clusterIndex |

The supercluster cluster index. Set to 1 (active cluster), 2 (standby cluster), or 3 (arbitrator). clusterIndex: <cluster_index> |

| clusterName |

The cluster name. Must be a valid DNS name per RFC1123. This is different for the active single-node cluster, standby single-node cluster, and single witness (arbitrator) node. clusterName: <cluster_name> |

| node |

The node name. This must be a valid DNS name: · contain no more than 253 characters · contain only lowercase alphanumeric characters, '-' or '.' · start with an alphanumeric character · end with an alphanumeric character The Initiator control plane IP, join token, and control plane, eastbound, and northbound interfaces (specified in network-config). node: name: <node_name> initiator: <control_plane_ip> # Initiator control plane IP joinToken: <join_token> controlPlaneInterface: enp1s0 eastboundInterface: enp3s0 vip: northbound: interface: enp2s0 |

| initiator |

The initiator configuration. For all three nodes in the supercluster. initiator: |

| vip |

The IP used for RESTCONF or UI access. This is the same for the active single-node cluster, standby single-node cluster, and single witness (arbitrator) node. vip: northbound: ip: <vip> type: L3 kafka: enabled: true |

| kafka |

The kafka configuration. kafka: enabled: true |

| joinToken |

The join token random string. joinToken: <join_token> |

| arbitratorNode |

The local cluster arbitrator node name. You can keep the default value (my-node3) as long as it differs from the node name. Not used in the 1+1+1 configuration. arbitratorNode: my-node3 # Local cluster arbitrator node name |

| clusterRole |

The cluster role, either worker or arbitrator. Set to worker for the active and standby clusters, and to arbitrator for the arbitrator node. clusterRole: <cluster_role> # worker or arbitrator |

| security |

The security configuration including the local users. For more information, see the Crosswork Hierarchical Controller Administration Guide. security: localUsers: - username: admin displayName: NxF Admin description: NextFusion Default Administrator locked: true mustChangePassword: false expiresInDays: 0 access: - permission/admin |
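The join token in the table above is described only as a random string. One way to produce such a string is sketched below. This is an assumption-laden illustration: the function name gen_join_token is hypothetical, and the length (32 hexadecimal characters) is an arbitrary choice, since this guide does not state a required format.

```shell
#!/bin/sh
# Hypothetical helper: prints 32 random hexadecimal characters.
# The token length and alphabet are assumptions, not product requirements.
gen_join_token() {
    # Read 16 random bytes and print them as hex without separators.
    od -A n -t x1 -N 16 /dev/urandom | tr -d ' \t\n'
    echo
}
```

The output can be pasted into the <join_token> placeholders of the user-data file.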

Install Supercluster Crosswork Hierarchical Controller using KVM

This procedure describes deploying a geo-redundancy supercluster in a 1+1+1 scenario, which includes:

● active single-node worker cluster

● standby single-node worker cluster

● single witness (arbitrator) node
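Each of the three members listed above uses its own clusterIndex and clusterRole values in its user-data file. The mapping can be written down as a small lookup. The sketch below only restates the values given in the user-data table; the function name cluster_role_for_index is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: prints the clusterRole for a given clusterIndex.
#   1 = active cluster  -> worker
#   2 = standby cluster -> worker
#   3 = arbitrator      -> arbitrator
cluster_role_for_index() {
    case $1 in
        1|2) echo worker ;;
        3)   echo arbitrator ;;
        *)   echo "clusterIndex must be 1, 2, or 3" >&2
             return 1 ;;
    esac
}
```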

Prerequisites: BGP is used for traffic routing to the virtual IP from the various locations.

Note: After the clusters join the supercluster, you cannot add or remove clusters or change their roles.

When you deploy the QCOW2 file, it installs the Crosswork Hierarchical Controller platform and the various Crosswork Hierarchical Controller applications.

Important: All clusters in the supercluster must be up and running. Also, the supercluster must be active to perform any of these tasks:

● Backup

● Restore

● Service Pack Installation

● Upgrades

To install Crosswork Hierarchical Controller:

1. Follow this procedure for three VMs (two workers and one arbitrator).

2. Complete the prerequisites. See KVM Requirements.

3. Ensure that you have the required config files:

◦ network-config: The network configuration file including the DNS configuration, the northbound, eastbound, and control plane networks, and the BGP router details.

◦ user-data: The optional NTP configuration, number of control planes, cluster configuration parameters, and the local users.

◦ meta-data: The SSH public key.

4. Copy the three config files to a folder, for example, <PATH_TO_DEPLOY_DIR>/vmConfig/cloud-config/

5. Generate the ISO file using the above configuration files:

◦ mkisofs -o "

6. Install the node using the following command, specifying the following values:

● <HOST_NAME>: The Virtual Machine name.

● <CPUs>: The number of VM CPUs.

● <MEMORY>: The amount of VM memory.

● <PATH_TO_DEPLOY_DIR>: The deployment directory.

● <QCOW2_FILE_NAME>: The QCOW2 file name. Important! This is the disk of the VM and each VM must have a unique file name.

● <SIZE>: The size of the VM disk in GB.

● <Control_Plane_VLAN_ID>: The control plane network used for communication between local cluster nodes. Not intended for supercluster use but must be configured. This may be the same for the active single-node cluster, standby single-node cluster, and single witness (arbitrator) node.

● <Northbound_VLAN_ID>: The VM network used for the VIP address for RESTCONF or UI access. This is different for the active single-node cluster, standby single-node cluster, and single witness (arbitrator) node.

● <Eastbound_VLAN_ID>: The VM network used for communication between the nodes that form the supercluster. This is different for the active single-node cluster, standby single-node cluster, and single witness (arbitrator) node.

virt-install \

--name <HOST_NAME> \

--vcpus <CPUs> \

--memory <MEMORY> \

--disk path=<PATH_TO_DEPLOY_DIR>/<QCOW2_FILE_NAME>.qcow2,format=qcow2 \

--disk path=<PATH_TO_DEPLOY_DIR>/<QCOW2_FILE_NAME>-data.qcow2,size=<SIZE>,device=disk,bus=virtio,format=qcow2 \

--disk path=<PATH_TO_DEPLOY_DIR>/vmConfig/cidata.iso,device=cdrom \

--osinfo debian12 \

--network bridge=<Control_Plane_VLAN_ID>,model=virtio \

--network bridge=<Northbound_VLAN_ID>,model=virtio \

--network bridge=<Eastbound_VLAN_ID>,model=virtio \

--boot loader=/usr/share/edk2/ovmf/OVMF_CODE.fd,loader.readonly=yes,loader_secure=no,nvram.template=/usr/share/edk2/ovmf/OVMF_VARS.fd,hd,uefi \

--serial pty \

--console pty,target_type=serial \

--noautoconsole

7. Wait for the VM to finish booting. It may take a few minutes before SSH access is available.

8. Log in to the VM using the private key that matches the public key specified in the meta-data file:

# ssh -i <private-key_file> nxf@<hco_management_ip>

◦ If you are prompted for a password, there is probably a problem with the key.

◦ If the command times out, check the IP settings.

9. Run the following commands to get the supercluster routing up and running:

Region 1:

sedo ha bgp init <region1_node_name> <region1_northbound_ip> <BGP_AS_1>

sedo ha bgp router add <region1_node_name> <region1_northbound_gateway> <BGP_AS> <BGP_session_password>

Region 2:

sedo ha bgp init <region2_node_name> <region2_northbound_ip> <BGP_AS_2>

sedo ha bgp router add <region2_node_name> <region2_northbound_gateway> <BGP_AS> <BGP_session_password>

In both regions:

sedo ha bgp router list <region{n}_node_name>

10. Run the following command on each node and record the CLUSTER_ID:

sudo sedo supercluster status

11. On the Region 1 node, run the following command to generate a sudo command to run on the Region 2 node:

Region 1 (local) > Region 2 (remote):

sudo sedo supercluster wait-for -b <region1_node_eastboundIP>:10443 <region2_node_CLUSTER_ID>

12. On the Region 2 node, run the generated command.

13. On the Region 1 node, run the following command to generate a sudo command to run on the Region 3 node:

Region 1 (local) > Region 3 (remote):

sudo sedo supercluster wait-for -b <region1_node_eastboundIP>:10443 <region3_node_CLUSTER_ID>

14. On the Region 3 node, run the generated command.

15. On the Region 2 node, run the following command to generate a sudo command to run on the Region 3 node:

Region 2 (local) > Region 3 (remote):

sudo sedo supercluster wait-for -b <region2_node_eastboundIP>:10443 <region3_node_CLUSTER_ID>

16. On the Region 3 node, run the generated command.

17. Run the following command on each node to view the peers:

sudo sedo supercluster status

18. Run the following command to check the supercluster connectivity:

sudo sedo supercluster connectivity

19. In region 1, run the following command to start the supercluster (this may take a few minutes):

sudo sedo supercluster start

20. Once the connectivity check succeeds, run the following command to check that the supercluster status is started:

sudo sedo supercluster status

21. Run the following command to display the BGP state:

sedo ha bgp show

22. Change the default password and access string of the admin user:

sedo security user set --access role/admin --password admin

Note: You will be prompted to provide a new password.

23. You can use sedo to configure the local users. For more information, see the Crosswork Hierarchical Controller Administration Guide.

24. Browse to the Crosswork Hierarchical Controller application using the Northbound Virtual IP and port 8443:

https://[Northbound Virtual IP]:8443/
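The wait-for steps in the procedure above pair every region with each higher-numbered region: Region 1 with Region 2, Region 1 with Region 3, and Region 2 with Region 3. The sketch below prints that sequence as a checklist. It is illustrative only: the function name list_peering_steps is hypothetical, and the printed command text is the template from the procedure with its placeholders left unresolved.

```shell
#!/bin/sh
# Hypothetical helper: list_peering_steps REGION_COUNT
# Prints one line per (local, remote) pair in the order used above.
list_peering_steps() {
    count=$1
    i=1
    while [ "$i" -lt "$count" ]; do
        j=$((i + 1))
        while [ "$j" -le "$count" ]; do
            printf 'Region %s (local) > Region %s (remote): ' "$i" "$j"
            printf 'sudo sedo supercluster wait-for -b <region%s_node_eastboundIP>:10443 <region%s_node_CLUSTER_ID>\n' "$i" "$j"
            j=$((j + 1))
        done
        i=$((i + 1))
    done
}
```

Calling list_peering_steps 3 prints three lines, one for each pairing in the 1+1+1 deployment. The command each line shows is run on the local region, and the command it generates is then run on the remote region.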

View Installed Crosswork Hierarchical Controller Applications

To view the installed Crosswork Hierarchical Controller applications:

1. After the installation is complete, ssh to the server.

2. Run the following command to see which applications are installed:

sedo hco apps list

The output displays the installed applications with their name and version.

Add Network Adapters and Discover Network Devices

For instructions on how to add network adapters and discover network devices, refer to the Cisco Crosswork Hierarchical Controller Administration Guide.

Upgrade and Convert Standalone Instance to Supercluster

You can upgrade a Cisco Crosswork Hierarchical Controller version 10.1 standalone instance and then use it as an instance when creating a Cisco Crosswork Hierarchical Controller version 11 supercluster.

To do this, upgrade the instance, create and set an eastbound interface for the supercluster, and then configure the supercluster.

Prerequisites

● Cisco Crosswork Hierarchical Controller version 10.1 standalone instance to be upgraded

● Two Cisco Crosswork Hierarchical Controller version 11 instances

● Cisco Crosswork Hierarchical Controller version 11 system pack

Upgrade the Crosswork Hierarchical Controller version 10.1 instance to Crosswork Hierarchical Controller version 11, as described in Upgrading Standalone. The procedure is summarized below.

Upgrade

1. Copy the system pack to the instance /data folder.

2. Upload the system pack:

sudo sedo system upgrade upload /data/<system-pack-name>

3. List the available upgrades:

sudo sedo system upgrade list

4. Apply the upgrade:

sudo sedo system upgrade apply

5. Wait for a minute and then reboot to complete:

sudo reboot

6. After the machine comes up, check that the upgrade is completed and all the system components are up and running:

sedo system status

sedo version

Create & Add an Eastbound Interface

This procedure describes how to add an eastbound interface to an instance in OpenStack. The instructions vary according to your hypervisor.

To add the eastbound interface:

1. Using the hypervisor UI, identify the existing eastbound network that is shared with the two other instances originally created with the Crosswork Hierarchical Controller v11 image.

2. Add the eastbound interface to the instance (using the hypervisor).

3. Create a network file for the eastbound interface on the instance itself.

For example, list the existing interface files:

ls -l /etc/systemd/network/

4. Create an interface network file that matches the new interface added to the instance:

sudo vi /etc/systemd/network/10-cloud-init-enp9s0.network

5. Add the following sections to the file created above. The values must match the interface that the hypervisor attached to the VM.

[Link]

MTUBytes=1450

[Match]

MACAddress=[ MAC address allocated by hypervisor]

Name=enp9s0

[Network]

DHCP=ipv4

6. Modify the new file’s permissions:

sudo chown systemd-network:systemd-network /etc/systemd/network/10-cloud-init-enp9s0.network

sudo chmod 644 /etc/systemd/network/10-cloud-init-enp9s0.network

7. Restart the VM network service for the changes to take effect:

sudo systemctl restart systemd-networkd

8. Set the eastbound interface (the one that was added in the previous section):

sudo sedo system set-eastbound INTERFACE_NAME
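The interface network file from steps 4 and 5 above can also be generated rather than typed by hand. The sketch below writes the same three sections to a path of your choice. It is a hedged illustration: the function name write_eastbound_network_file is hypothetical, the MTU defaults to the 1450 value shown in the example, and the ownership, permission, and restart steps from the procedure still need to be run afterwards.

```shell
#!/bin/sh
# Hypothetical helper:
#   write_eastbound_network_file OUT INTERFACE MAC_ADDRESS [MTU]
# Writes the [Link], [Match], and [Network] sections shown in step 5.
write_eastbound_network_file() {
    out=$1 iface=$2 mac=$3 mtu=${4:-1450}
    cat > "$out" <<EOF
[Link]
MTUBytes=$mtu

[Match]
MACAddress=$mac
Name=$iface

[Network]
DHCP=ipv4
EOF
}
```

For example, write_eastbound_network_file ./10-cloud-init-enp9s0.network enp9s0 52:54:00:12:34:56 (the MAC address is illustrative) produces a file that can be reviewed and then copied to /etc/systemd/network/ with sudo.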

Update Northbound Interface VIP

This optional procedure describes how to update the northbound interface VIP.

To update the northbound interface VIP:

· Run the following commands to update the VIP:

kubectl -n nxf-system set env statefulset/controller VIP_TYPE=L3

kubectl -n nxf-system set env statefulset/controller VIP_ADDRESS=<new-vip-address>

sudo kubectl --kubeconfig /etc/kubernetes/admin.conf -n nxf-system scale deployment/ingress-proxy --replicas 0

kubectl -n nxf-system patch deployment/ingress-proxy --type='json' -p='[{"op": "replace", "path": "/spec/template/spec/containers/0/ports/0/hostIP", "value": "<new-vip-address>"}]'

sudo kubectl --kubeconfig /etc/kubernetes/admin.conf -n nxf-system scale deployment/ingress-proxy --replicas $(kubectl get node --no-headers -l nxf-cluster=participant | wc -l)

sudo ip address del <old-vip-address>/32 dev <northbound-interface>

Use Standalone Instance to initiate a Supercluster

1. Continue with the supercluster configuration procedure below.

2. Run the following commands to get the supercluster routing up and running:

Region 1:

sedo ha bgp init <region1_node_name> <region1_northbound_ip> <BGP_AS_1>

sedo ha bgp router add <region1_node_name> <region1_northbound_gateway> <BGP_AS> <BGP_session_password>

Region 2:

sedo ha bgp init <region2_node_name> <region2_northbound_ip> <BGP_AS_2>

sedo ha bgp router add <region2_node_name> <region2_northbound_gateway> <BGP_AS> <BGP_session_password>

In both regions:

sedo ha bgp router list <region{n}_node_name>

3. Run the following command on each node and record the CLUSTER_ID:

sudo sedo supercluster status

4. On the Region 1 node, run the following command to generate a sudo command to run on the Region 2 node:

Region 1 (local) > Region 2 (remote):

sudo sedo supercluster wait-for -b <region1_node_eastboundIP>:10443 <region2_node_CLUSTER_ID>

5. On the Region 2 node, run the generated command.

6. On the Region 1 node, run the following command to generate a sudo command to run on the Region 3 node:

Region 1 (local) > Region 3 (remote):

sudo sedo supercluster wait-for -b <region1_node_eastboundIP>:10443 <region3_node_CLUSTER_ID>

7. On the Region 3 node, run the generated command.

8. On the Region 2 node, run the following command to generate a sudo command to run on the Region 3 node:

Region 2 (local) > Region 3 (remote):

sudo sedo supercluster wait-for -b <region2_node_eastboundIP>:10443 <region3_node_CLUSTER_ID>

9. On the Region 3 node, run the generated command.

10. Run the following command on each node to view the peers:

sudo sedo supercluster status

11. Run the following command to check the supercluster connectivity:

sudo sedo supercluster connectivity

12. In region 1, run the following command to start the supercluster (this may take a few minutes):

sudo sedo supercluster start

13. Once the connectivity check succeeds, run the following command to check that the supercluster status is started:

sudo sedo supercluster status

14. Run the following command to display the BGP state:

sedo ha bgp show

15. Change the default password and access string of the admin user:

sedo security user set --access role/admin --password admin

Note: You will be prompted to provide a new password.

16. You can use sedo to configure the local users. For more information, see the Crosswork Hierarchical Controller Administration Guide.

17. Browse to the Crosswork Hierarchical Controller application using the Northbound Virtual IP and port 8443:

https://[Northbound Virtual IP]:8443/

Upgrade Cisco Crosswork Hierarchical Controller

This topic describes how to upgrade Crosswork Hierarchical Controller.

Note: Upgrading from Crosswork Hierarchical Controller version 10 to version 11 is a multi-step process:

1. Upgrade from version 10.0 to version 10.1. For more information, see the Crosswork Hierarchical Controller Release Notes version 10.1.

2. Upgrade from version 10.1 to version 11.

1. Download Crosswork Hierarchical Controller version 11 from cisco.com.

2. Download the adapter service packs. These will be required after the upgrade, and before you re-enable the adapters. The installation command MUST use the adapter names that are in use prior to upgrading, so record the names that appear in Device Manager.

3. Check that the system status is Running:

sedo system status

4. Check that the Crosswork Hierarchical Controller version is 10.1:

sedo hco version

5. Check the NSO version:

sedo nso version
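The version check in step 4 above can be made part of a pre-upgrade script. The sketch below compares a version string against the expected major.minor release. It rests on assumptions: the function name version_matches is hypothetical, and because the output format of sedo hco version is not shown in this guide, the caller is expected to extract the bare version string first.

```shell
#!/bin/sh
# Hypothetical helper: version_matches MAJOR_MINOR VERSION
# Succeeds when VERSION is MAJOR_MINOR itself or a patch or build of it
# (for example 10.1, 10.1.3, or 10.1-b12), and fails for 10.10 or 11.0.
version_matches() {
    want=$1 have=$2
    case $have in
        "$want"|"$want".*|"$want"-*) return 0 ;;
        *) return 1 ;;
    esac
}
```

Matching on the separator that follows the prefix avoids treating a release such as 10.10 as if it were 10.1.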

This procedure describes how to upgrade standalone Crosswork Hierarchical Controller:

1. Disable the adapters

2. Backup Crosswork Hierarchical Controller Standalone

3. Replace NSO Packages

4. Upgrade Crosswork Hierarchical Controller Standalone

5. Upgrade the adapters

Disable Adapters

Note: During the upgrade, if the Cisco CNC adapter is configured with the same destination name, old CDG adapter collection jobs are automatically removed from the Cisco CNC controller. If a different destination name is used, the old collection jobs must be manually deleted from the Cisco CNC controller.

Important: Ensure that you disable all the adapters, especially the CDG adapters, before upgrading. If you do not, the default DYNAMIC_APP_PORT=65001 will not be available after upgrade for the CNC adapters, and this will require additional configuration to use a different port.

1. Disable all the adapters. For each adapter:

a. In the applications bar in Crosswork Hierarchical Controller, select Device Manager > Adapters.

b. Select the required adapter in the Adapters list on the left.

c. Select the General tab.

d. Deselect the Enabled checkbox.

e. Click Save.

2. Disable each of the adapter services:

sedo service disable <adapter_service_name>

3. Check that the adapter services are disabled:

sedo system status
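When several adapter services need to be disabled in step 2 above, the command can be wrapped in a loop. The sketch below is illustrative: the function name disable_services and the SEDO override variable are hypothetical, and the override exists only so that the loop can be dry-run with echo before it is pointed at the real sedo command.

```shell
#!/bin/sh
# Hypothetical helper: disable_services NAME [NAME...]
# Runs "sedo service disable NAME" for each name and stops at the
# first failure. Set SEDO=echo to print the commands instead.
disable_services() {
    for svc in "$@"; do
        "${SEDO:-sedo}" service disable "$svc" || return 1
    done
}
```

Running the function with SEDO=echo and your adapter service names prints the commands that would be executed, which is a convenient check before the actual run.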

Backup Crosswork Hierarchical Controller Standalone

Important: Run this procedure after disabling the adapters.

1. Make a full backup of the system:

sedo backup create full

2. Check the backup list:

sedo backup list

3. Download the backup file with a password:

sedo backup download -p <password> <backup file name>

Replace NSO Packages

1. Create an NSO backup before upgrading the NSO packages:

sedo nso backup create

2. Delete the old packages in the /nso/run/packages directory of the NSO Manager pod:

nso-pod$ cd /nso/run/packages

nso-pod$ rm -r *

nso-pod$ exit

3. Download new packages and place them in the /nso/run/packages directory:

kubectl cp [nso-package].tar.gz hco/nso-manager-srv-0:/usr/app

kubectl exec -it nso-manager-srv-0 -n hco -- /bin/bash

nso-pod$ cp /usr/app/[nso-package].tar.gz /nso/run/packages/

nso-pod$ cd /nso/run/packages/

nso-pod$ tar -zvxf [nso-package].tar.gz

nso-pod$ cp [nso-package]/packages/*.tar.gz /nso/run/packages/

Upgrade Crosswork Hierarchical Controller Standalone

1. Copy the system pack provided to the instance /data folder.

2. Upload the system pack:

sudo sedo system upgrade upload /data/<system-pack-name>

3. List the available upgrades:

sudo sedo system upgrade list

4. Apply the upgrade:

sudo sedo system upgrade apply

5. Wait one minute and then reboot to complete:

sudo reboot

6. Verify the installed versions and the configuration keys:

sedo version

sedo hco version

sedo nso version

sedo config list-keys

Upgrade Adapters

1. Download the adapter service packs.

2. Install the adapter service packs. The installation command MUST use the adapter name that was in use prior to upgrading. If this is not the default adapter name (that is, if the DYNAMIC_APP_GUID parameter was used in the original installation to modify the name), install the new service pack with DYNAMIC_APP_GUID=[adapter name as it was displayed in Device Manager on v10].

3. Wait until the adapter pods are re-created using the newly installed service pack, and then validate that the adapter pods are restarted:

sedo system status

4. Re-enable the adapters in Device Manager.

This procedure describes how to upgrade a Crosswork Hierarchical Controller supercluster from version 10.1 to version 11.

A supercluster in a 1+1+1 scenario includes:

● active single-node cluster

● standby single-node cluster

● single witness (arbitrator) node

Note: If Cluster1 is down and the standby node acts as the active node, do not deploy the new adapter. The deployment will fail with an error: cluster 1 is not reachable. Ensure that the Cluster1 is operational and reachable before you initiate the deployment process.

This procedure describes how to upgrade:

1. Disable the adapters on the Active node

2. Backup the Active node

3. Upgrade NSO on both the Active and Standby nodes

4. Upgrade the nodes separately in the following order:

a. Active

b. Standby

c. Arbitrator

5. Upgrade the adapters on the Active node

Disable Adapters on Active Node

Disable all the adapters on the active node only.

Important: Ensure that you disable all the adapters, especially the CDG adapters, before upgrading. If you do not, the default DYNAMIC_APP_PORT=65001 will not be available after upgrade for the CNC adapters, and this will require additional configuration to use a different port.

1. Disable all the adapters. For each adapter:

a. In the applications bar in Crosswork Hierarchical Controller, select Device Manager > Adapters.

b. Select the required adapter in the Adapters list on the left.

c. Select the General tab.

d. Deselect the Enabled checkbox.

e. Click Save.

2. Disable each of the adapter services:

sedo service disable <adapter_service_name>

3. Check that the adapter services are disabled:

sedo system status

Backup Active Node

Back up the active node only.

1. Make a full backup of the system:

sedo backup create full

2. Check the backup list:

sedo backup list

3. Download the backup file with a password:

sedo backup download -p <password> <backup file name>

Replace NSO Packages

Replace the NSO packages on both the Active and Standby nodes:

1. Create an NSO backup before upgrading the NSO packages:

sedo nso backup create

2. Delete the old packages in the /nso/run/packages directory of the NSO Manager pod:

nso-pod$ cd /nso/run/packages

nso-pod$ rm -r *

nso-pod$ exit

3. Download new packages and place them in the /nso/run/packages directory:

kubectl cp [nso-package].tar.gz hco/nso-manager-srv-0:/usr/app

kubectl exec -it nso-manager-srv-0 -n hco -- /bin/bash

nso-pod$ cp /usr/app/[nso-package].tar.gz /nso/run/packages/

nso-pod$ cd /nso/run/packages/

nso-pod$ tar -zvxf [nso-package].tar.gz

nso-pod$ cp [nso-package]/packages/*.tar.gz /nso/run/packages/

Upgrade the Nodes

Upgrade the nodes in parallel:

1. Active

2. Standby

3. Arbitrator

1. Copy the system pack to the /data folder on all instances.

2. Upload the system pack on all nodes:

sudo sedo system upgrade upload /data/<system-pack-name>

3. List the available upgrades on all nodes:

sudo sedo system upgrade list

4. Apply the upgrade on all nodes:

sudo sedo system upgrade apply

Note: Wait for apply to be completed on all nodes before proceeding to the next step.

5. Wait for a minute and then reboot to complete (all nodes):

sudo reboot

6. Verify the installed versions and the configuration keys:

sedo version

sedo hco version

sedo nso version

sedo config list-keys

Upgrade Adapters on Active Node

Upgrade and enable the adapters on the active node only.

Note: During the upgrade, if the Cisco CNC adapter is configured with the same destination name, old CDG adapter collection jobs are automatically removed from the Cisco CNC controller. If a different destination name is used, the old collection jobs must be manually deleted from the Cisco CNC controller.

1. Download the adapter service packs.

2. Install the adapter service packs. The installation command MUST use the adapter name that was in use prior to upgrading. If this is not the default adapter name (that is, if the DYNAMIC_APP_GUID parameter was used in the original installation to modify the name), install the new service pack with DYNAMIC_APP_GUID=[adapter name as it was displayed in Device Manager on v10].

3. Wait until the adapter pods are re-created using the newly installed service pack, and then validate that the adapter pods are restarted:

sedo system status

4. Re-enable the adapters in Device Manager.

Install a Cisco Network Services Orchestrator Crosswork Hierarchical Controller Function Pack

NSO Engine Embedded Inside Crosswork Hierarchical Controller

NSO runs as a Crosswork Hierarchical Controller micro-service, alongside the Crosswork Hierarchical Controller applications and adapters.

This exposes the NSO NBI from Crosswork Hierarchical Controller and the NSO UI as a Crosswork Hierarchical Controller application (which will mostly be used for configuration of Function Packs/NEDs).

Note: Crosswork Hierarchical Controller HA and embedded NSO integrate seamlessly. The NSO database exists on both the Crosswork Hierarchical Controller Active and Standby nodes, and the database is synchronized continuously. If the Crosswork Hierarchical Controller Active node fails, and the Standby node takes over and becomes the Active node, NSO is updated automatically and switches nodes too.

Network Services Orchestrator (NSO)

The Crosswork Hierarchical Controller Function Pack integrates Cisco NSO with a controller to deploy services on the controller. This integration is with either a Nokia Service Provider (NSP) controller or a Cisco Crosswork Network Controller (CNC). The NEDs are installed as part of the Function Pack installation.

For full details on installing and using the Network Services Orchestrator (NSO) Crosswork Hierarchical Controller Function Pack, see the:

● Cisco NSO Crosswork Hierarchical Controller - Function Pack Installation Guide

● Cisco NSO Crosswork Hierarchical Controller - Function Pack User Guide

For details on Function Pack versions, contact your Cisco Representative.

For full details on installing and using the Cisco NSO Routed Optical Networking Core Function Pack, see the:

● Cisco NSO Routed Optical Networking Core Function Pack Installation Guide

● Cisco NSO Routed Optical Networking Core Function Pack User Guide

● Cisco RON Solution Guide

Install NSO Function Pack in Crosswork Hierarchical Controller Embedded Instance

The embedded NSO instance is a fully functional standalone container installation of NSO. The installation procedure is the same as the standard installation with one difference: the file system of NSO is not readily available on the host server.

To load the new function pack, the administrator must copy the function pack files onto the NSO pod, and then log into the pod shell and place the files in the correct directories. Once the files are on the NSO pod, follow the instructions in the Function Pack Installation Guide.

To install NSO Function Pack in Crosswork Hierarchical Controller Embedded Instance:

1. Connect to the Crosswork Hierarchical Controller host server via SSH.

2. Download the NSO function pack.

3. Copy the NSO function pack into the NSO pod:

kubectl cp [function-pack-file] <zone-a/zone-b>/nso-manager-srv-0:/usr/app

4. Log into the pod shell:

sedo shell <zone-a/zone-b>/nso-manager-srv

cd /usr/app/nso-temp

5. Continue with function pack extraction and installation as specified in the Function Pack Installation Guide.

6. To provision the VPN using eNSO:

a. Configure devices authentication groups:

set devices authgroups group CWAuth default-map

set devices authgroups group CWAuth default-map remote-name admin

set devices authgroups group CWAuth default-map remote-password xxxxx

b. Add the CNC as a device in eNSO:

set devices device Tier4-CW address cwgeo-mgmt.cisco.com

set devices device Tier4-CW port 30603

set devices device Tier4-CW authgroup CWAuth

set devices device Tier4-CW device-type generic ned-id cisco-cnc_rc-gen-1.0

set devices device Tier4-CW ned-settings cisco-cnc_rc connection ssl accept-any true

c. Add the controller:

set hco-nm-settings default-hco-controller CWAuth
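The copy command in step 3 differs only in the zone and the file name, so it can be built from two parameters. The following is a minimal sketch under stated assumptions: fp_copy_command is a hypothetical helper name, it only prints the command rather than running it, and the pod name and target path are taken from step 3.

```shell
# Sketch: build the copy command for a given zone and function pack file.
# fp_copy_command is a hypothetical helper; it prints the command only.
fp_copy_command() {
    zone="$1"
    file="$2"
    # Only the two zones named in this guide are accepted.
    case "$zone" in
        zone-a|zone-b) ;;
        *)
            echo "zone must be zone-a or zone-b" >&2
            return 1
            ;;
    esac
    if [ -z "$file" ]; then
        echo "function pack file is required" >&2
        return 1
    fi
    echo "kubectl cp $file $zone/nso-manager-srv-0:/usr/app"
}
```

Review the printed command before running it on the Crosswork Hierarchical Controller host.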

Considerations for a High Availability (HA) Deployment

HA in NSO must be disabled before installing or updating function packs.

1. On both the active and standby nodes, in the NSO CLI execute:

admin@ncs> request high-availability disable

2. On both the active and standby nodes, install the function pack.

3. Restart the NSO pods to reactivate HA protection:

sudo kubectl --kubeconfig /etc/kubernetes/admin.conf -n zone-a scale statefulset nso-manager-srv --replicas=0

sudo kubectl --kubeconfig /etc/kubernetes/admin.conf -n zone-b scale statefulset nso-manager-srv --replicas=0

sudo kubectl --kubeconfig /etc/kubernetes/admin.conf -n zone-a scale statefulset nso-manager-srv --replicas=1

sudo kubectl --kubeconfig /etc/kubernetes/admin.conf -n zone-b scale statefulset nso-manager-srv --replicas=1
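The four commands in step 3 follow a fixed pattern: scale the NSO statefulset to 0 in both zones, then back to 1 in both zones. The following is a minimal sketch that prints them in that order. nso_restart_commands and KUBECONFIG_PATH are hypothetical names introduced for illustration, and nothing is executed.

```shell
# Sketch: print the scale-down and scale-up commands for the NSO statefulset.
# nso_restart_commands is a hypothetical helper; it prints commands only.
KUBECONFIG_PATH="/etc/kubernetes/admin.conf"   # path used in step 3

nso_restart_commands() {
    # Outer loop: scale to 0 first, then back to 1.
    for replicas in 0 1; do
        # Inner loop: apply the same replica count to both zones.
        for zone in zone-a zone-b; do
            echo "sudo kubectl --kubeconfig $KUBECONFIG_PATH -n $zone scale statefulset nso-manager-srv --replicas=$replicas"
        done
    done
}
```

Printing the commands first lets you confirm the order before running them one at a time.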

Example of How to Install the RON Function Pack

This section shows an example of installing a RON function pack on the NSO pod. The example uses an earlier version. For the complete and most up-to-date procedure, refer to the relevant Function Pack Installation Guide.

1. Copy the function pack file into the pod:

kubectl cp nso-6.1-ron-2.1.1.tar.gz zone-a/nso-manager-srv-0:/usr/app

2. Move into the NSO pod:

sedo shell zone-a/nso-manager-srv

cd /usr/app/nso-temp

3. Untar the function pack tar.gz file:

tar xvzf nso-6.1-ron-2.1.1.tar.gz

cd nso-6.1-ron-2.1.1/

4. Copy the function pack packages to the rundir:

cp ron/core-fp-packages/*.tar.gz $NCS_RUN_DIR/packages/

5. Start the NSO CLI from the packages directory:

cd $NCS_RUN_DIR/packages/

ncs_cli -u admin

6. Load the packages:

request packages reload

7. Verify that the function pack has successfully loaded:

show packages package package-version | select build-info ncs version | select build-info file | select build-info package sha1 | select oper-status error-info | select oper-status up | tab

8. Set the SSH public-key algorithms:

configure

set devices global-settings ssh-algorithms public-key [ ssh-ed25519 ecdsa-sha2-nistp256 ecdsa-sha2-nistp384 ecdsa-sha2-nistp521 rsa-sha2-512 rsa-sha2-256 ssh-rsa ]

commit

9. Start the NSO CLI from the bootstrap data directory so that the XML files can be loaded with load merge:

cd /nso/run/packages/nso-6.1-ron-2.1.1/ron/bootstrap-data

ncs_cli -u admin

10. Load bootstrap data according to the function pack installation guide:

configure

unhide debug

unhide ron

load merge commit-queue-settings.xml

commit

...

<repeat for all files in installation guide>

...

load merge RON-status-codes.xml

commit
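Step 10 repeats the same two lines (load merge, then commit) for each bootstrap file. The following is a minimal sketch that prints that sequence for a list of file names. bootstrap_load_script is a hypothetical helper name, and the file list and its order are assumptions that must come from the Function Pack Installation Guide.

```shell
# Sketch: print the NSO CLI lines that load and commit each bootstrap file.
# bootstrap_load_script is a hypothetical helper; file names are arguments.
bootstrap_load_script() {
    echo "configure"
    echo "unhide debug"
    echo "unhide ron"
    # One load merge followed by one commit per file, in the order given.
    for xml in "$@"; do
        echo "load merge $xml"
        echo "commit"
    done
}
```

For example, bootstrap_load_script commit-queue-settings.xml RON-status-codes.xml prints the lines for the two files named in step 10. Review the output and enter it in the NSO CLI session opened in step 9.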

The device-type and ned-id depend on the actual device you want to connect, as well as the NED version installed on NSO. Update the commands below accordingly.

To add a device:

1. Add credentials:

set devices authgroups group <credential_name> default-map remote-name <username> remote-password <password>

commit

2. Add device:

set devices device <device_name> address <IP> authgroup <credential_name> device-type cli ned-id <cisco-iosxr-cli-7.49>

set devices device <device_name> state admin-state unlocked

commit

request devices device <device_name> ssh fetch-host-key

request devices device <device_name> connect

request devices device <device_name> sync-from
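When several devices are added, the lines in step 2 can be generated from a few parameters. The following is a minimal sketch: add_device_commands is a hypothetical helper name, it prints the NSO CLI lines without running them, and the device-type cli setting is copied from the example above and must match your device and installed NED.

```shell
# Sketch: print the NSO CLI lines for adding one device.
# add_device_commands is a hypothetical helper; all four arguments are required.
add_device_commands() {
    if [ "$#" -ne 4 ]; then
        echo "usage: add_device_commands <device_name> <IP> <authgroup> <ned-id>" >&2
        return 1
    fi
    name="$1"
    ip="$2"
    authgroup="$3"
    ned_id="$4"
    echo "set devices device $name address $ip authgroup $authgroup device-type cli ned-id $ned_id"
    echo "set devices device $name state admin-state unlocked"
    echo "commit"
    echo "request devices device $name ssh fetch-host-key"
    echo "request devices device $name connect"
    echo "request devices device $name sync-from"
}
```

For example, add_device_commands xr-1 192.0.2.10 lab-auth cisco-iosxr-cli-7.49 prints the six lines for a device named xr-1. The device name, address, and authgroup here are illustrative values only.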
