Cisco and Hitachi Adaptive Solutions for SAP HANA TDI


Deployment Guide for Cisco and Hitachi Converged Infrastructure with Cisco UCS Blade Servers, Cisco Nexus 9336C-FX2 Switches, Cisco MDS 9706 Fabric Switches, and Hitachi VSP G370 Storage Systems with SUSE Linux Enterprise Server for SAP Applications 12 SP4 and Red Hat Enterprise Linux 7.5

Published: April 2, 2019

Updated: February 7, 2020

About the Cisco Validated Design Program

The Cisco Validated Design (CVD) program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, go to:

http://www.cisco.com/go/designzone.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

CCDE, CCENT, Cisco Eos, Cisco Lumin, Cisco Nexus, Cisco StadiumVision, Cisco TelePresence, Cisco WebEx, the Cisco logo, DCE, and Welcome to the Human Network are trademarks; Changing the Way We Work, Live, Play, and Learn and Cisco Store are service marks; and Access Registrar, Aironet, AsyncOS, Bringing the Meeting To You, Catalyst, CCDA, CCDP, CCIE, CCIP, CCNA, CCNP, CCSP, CCVP, Cisco, the Cisco Certified Internetwork Expert logo, Cisco IOS, Cisco Press, Cisco Systems, Cisco Systems Capital, the Cisco Systems logo, Cisco Unified Computing System (Cisco UCS), Cisco UCS B-Series Blade Servers, Cisco UCS C-Series Rack Servers, Cisco UCS S-Series Storage Servers, Cisco UCS Manager, Cisco UCS Management Software, Cisco Unified Fabric, Cisco Application Centric Infrastructure, Cisco Nexus 9000 Series, Cisco Nexus 7000 Series. Cisco Prime Data Center Network Manager, Cisco NX-OS Software, Cisco MDS Series, Cisco Unity, Collaboration Without Limitation, EtherFast, EtherSwitch, Event Center, Fast Step, Follow Me Browsing, FormShare, GigaDrive, HomeLink, Internet Quotient, IOS, iPhone, iQuick Study, LightStream, Linksys, MediaTone, MeetingPlace, MeetingPlace Chime Sound, MGX, Networkers, Networking Academy, Network Registrar, PCNow, PIX, PowerPanels, ProConnect, ScriptShare, SenderBase, SMARTnet, Spectrum Expert, StackWise, The Fastest Way to Increase Your Internet Quotient, TransPath, WebEx, and the WebEx logo are registered trademarks of Cisco Systems, Inc. and/or its affiliates in the United States and certain other countries.

All other trademarks mentioned in this document or website are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (0809R)

© 2020 Cisco Systems, Inc. All rights reserved.

Table of Contents

Deployment Hardware and Software

Hardware and Software Versions

Cisco Nexus 9000 Series Switch Network Configuration

Cisco Nexus 9000 Initial Configuration

Enable Appropriate Cisco Nexus 9000 Series Switches Features and Settings

Create VLANs for SAP HANA Traffic

Configure Virtual Port-Channel Domain

Configure Network Interfaces for the VPC Peer Links

Configure vPCs with Cisco UCS Fabric Interconnect

Configuration of Hitachi Storage

Create Dynamic Provisioning Pools

Provision the LUNS (Virtual Volumes)

Create Virtual Volumes for the Operating System LUNS and Map Ports

Create Virtual Volumes for HANA Shared File System and Map Ports

Create Virtual Volumes for Log LUNs and Map Ports

Create Virtual Volumes for Data LUNs and Map Ports

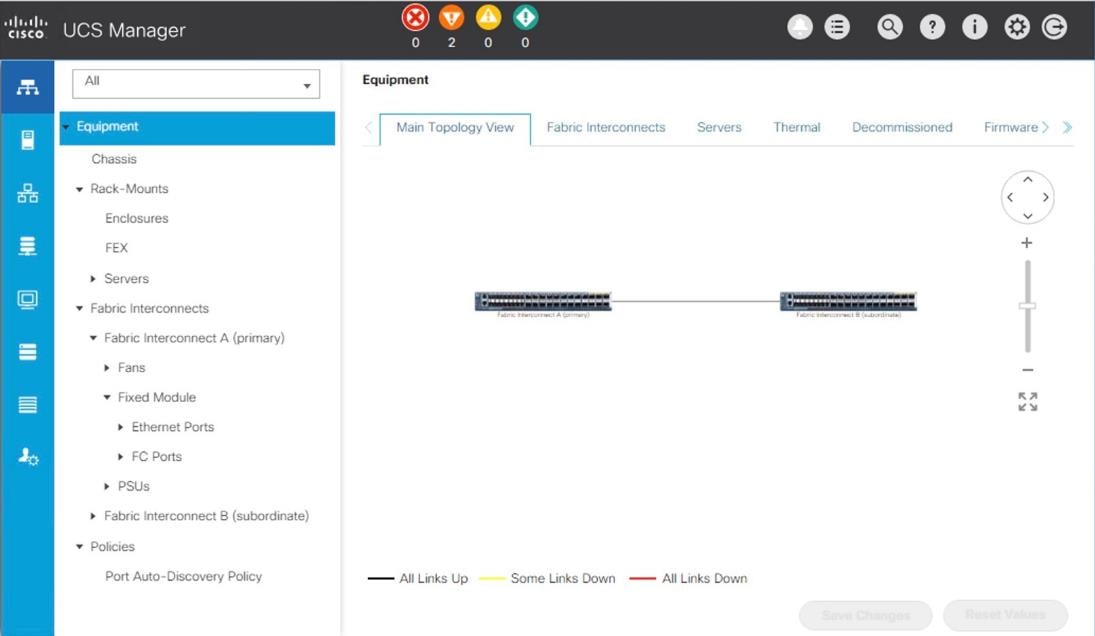

Cisco UCS Configuration Overview

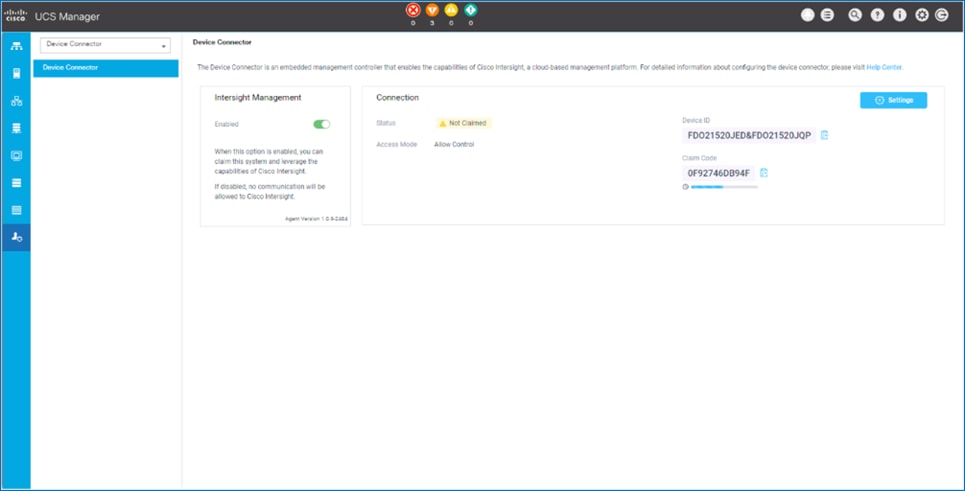

Upgrade Cisco UCS Manager Software to Version 4.0(1c)

Initial Setup of Cisco UCS 6332-16UP Fabric Interconnects

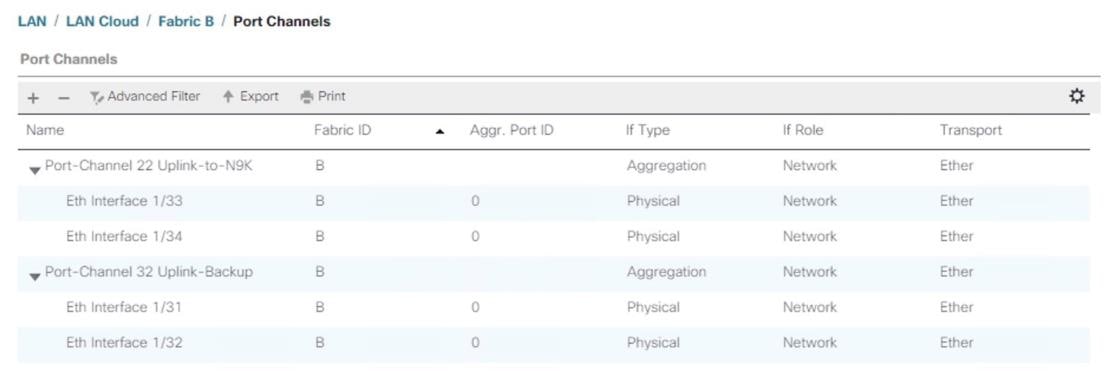

Cisco UCS 6332-16UP Fabric Interconnect B

Configure Ethernet Uplink Ports

Add Block of IP Addresses for KVM Access

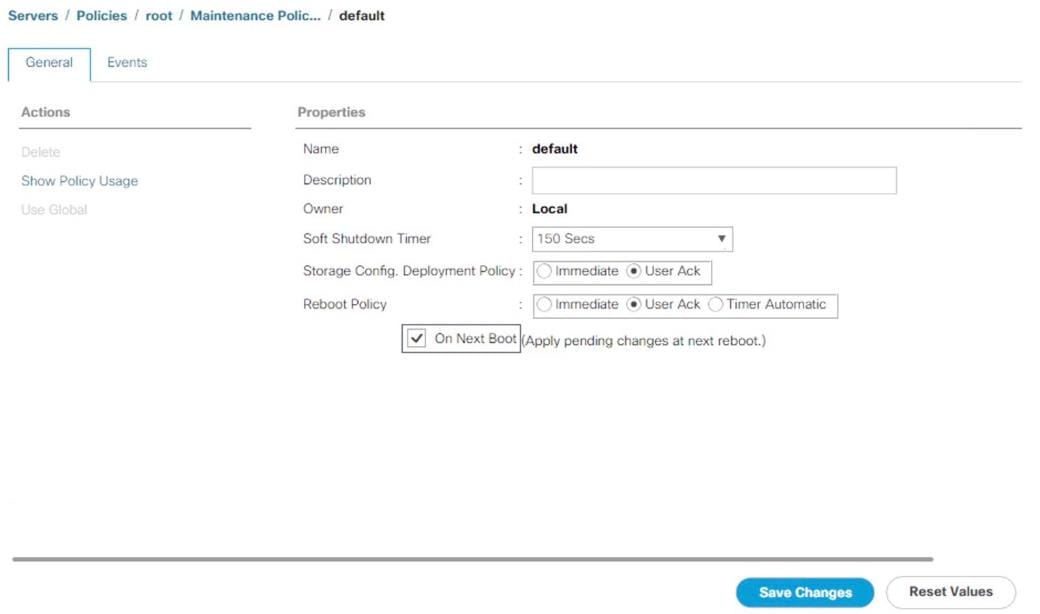

Update Default Maintenance Policy

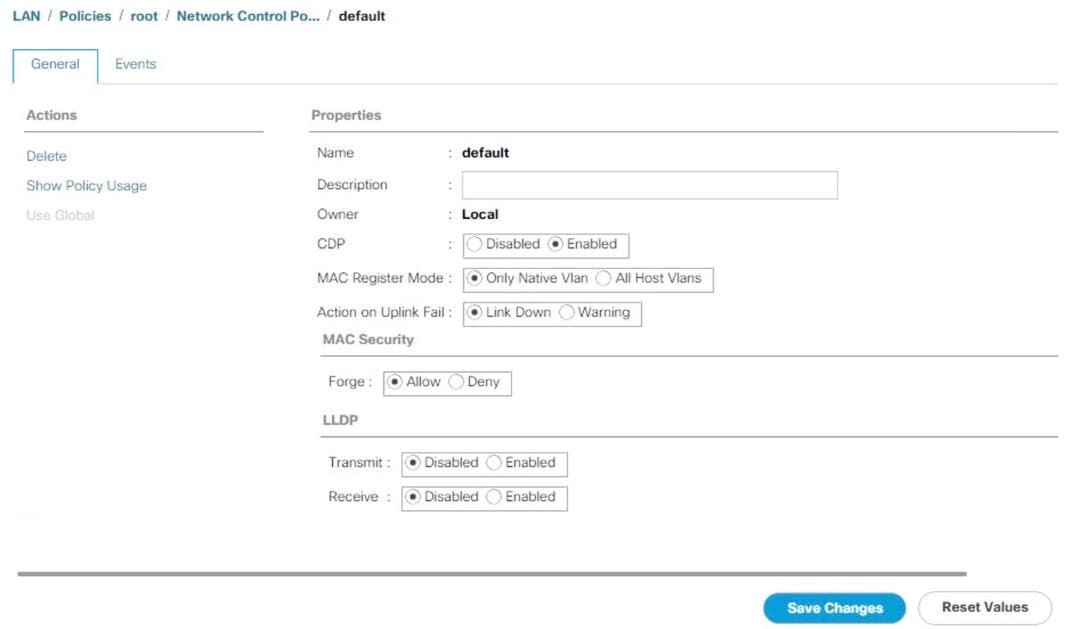

Configure Cisco UCS LAN Connectivity

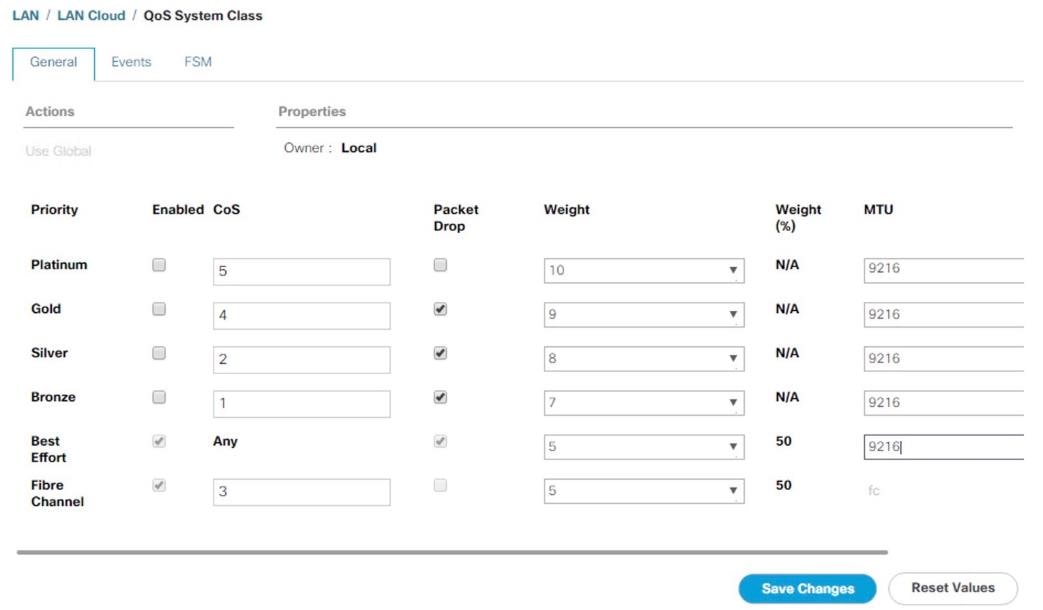

Set Jumbo Frames in Cisco UCS Fabric

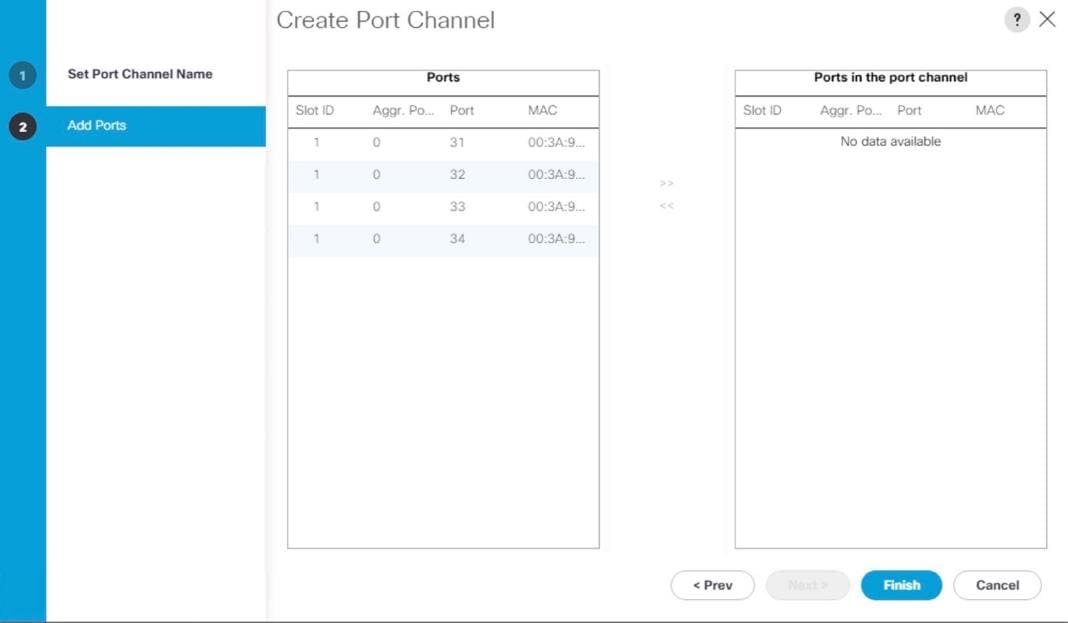

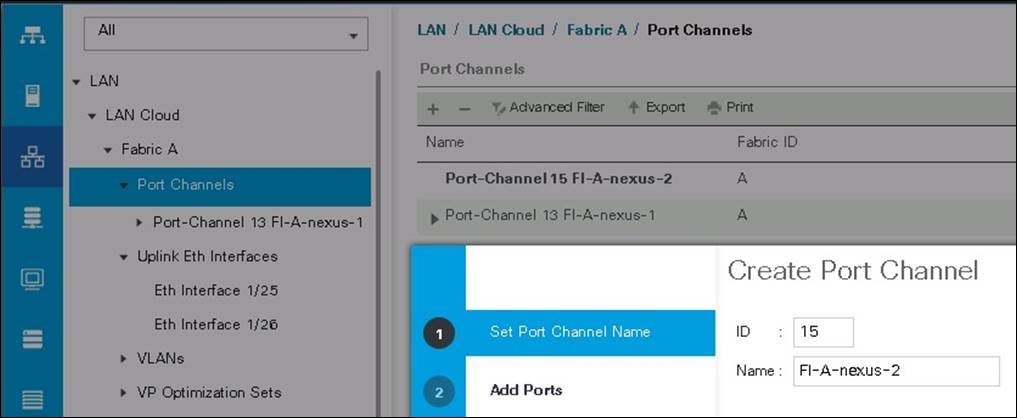

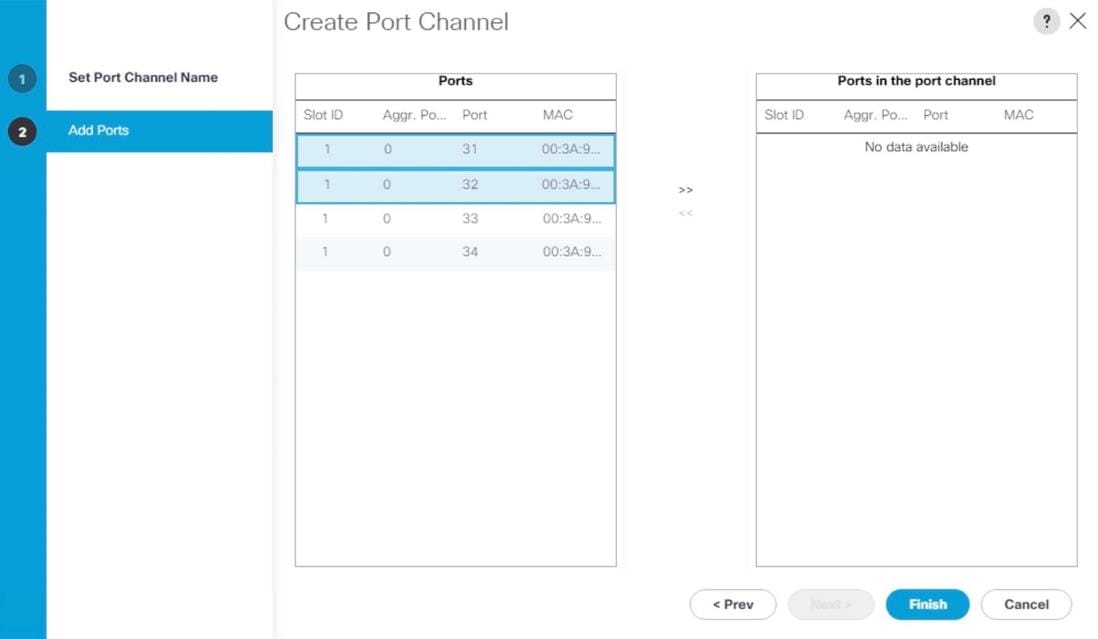

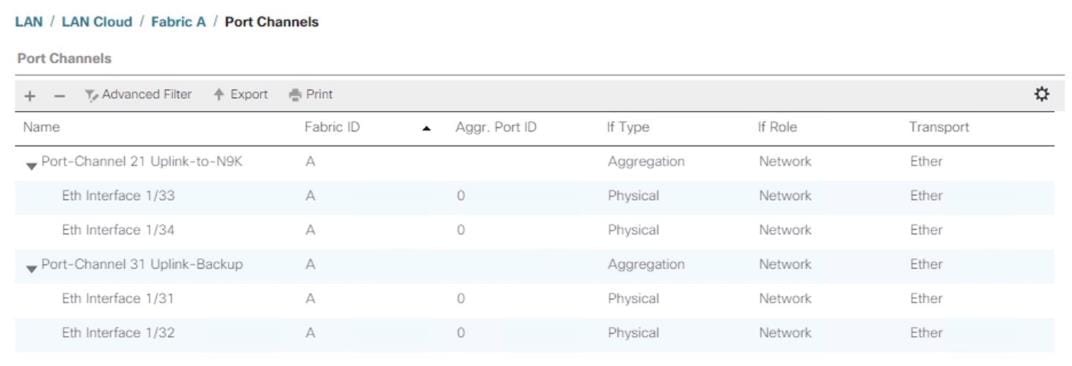

Create LAN Uplink Port Channels

Configure Cisco UCS SAN Configurations

Create Boot Policy for SAN Boot

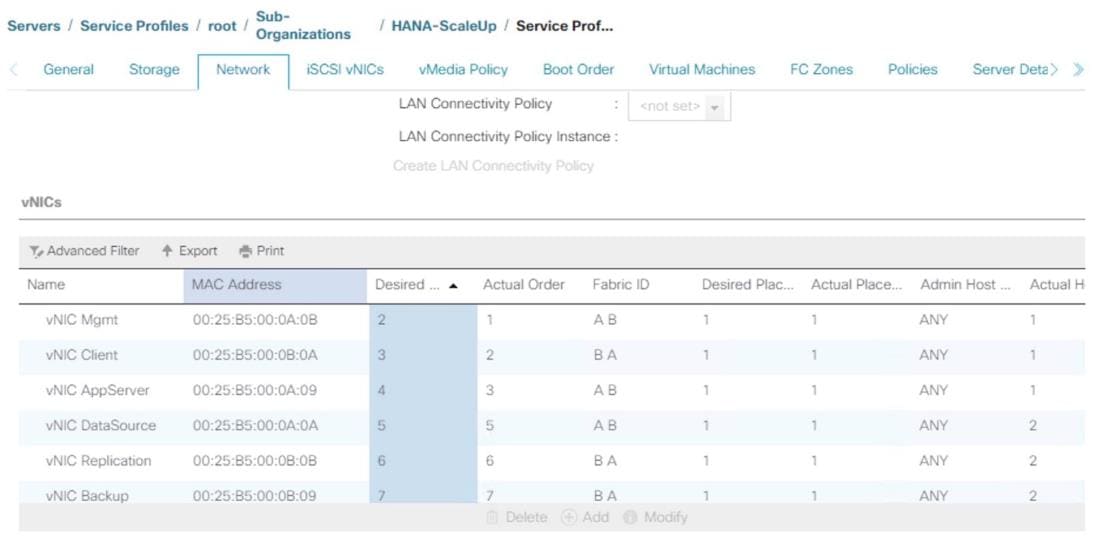

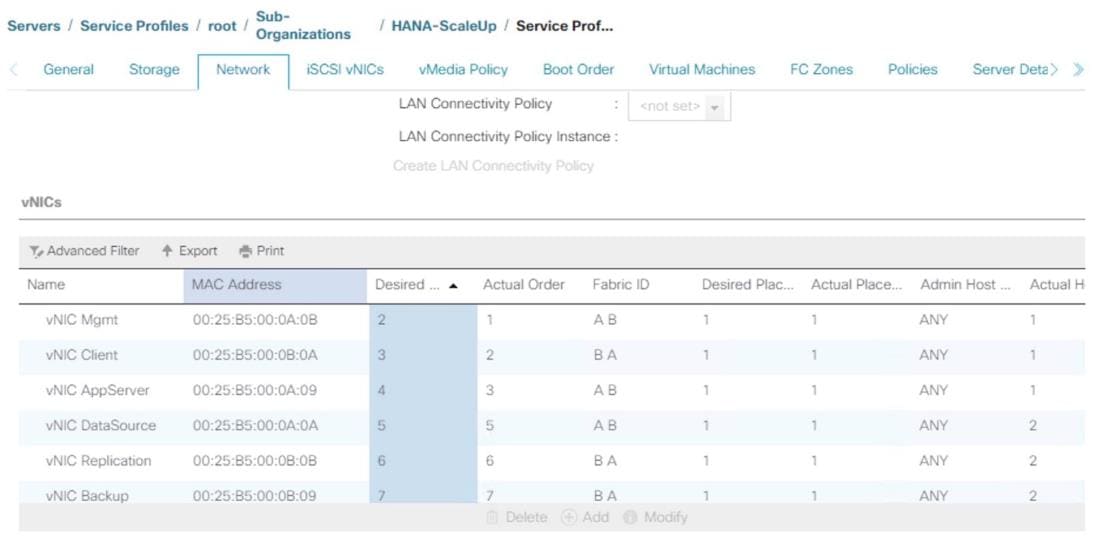

Create Service Profile Templates for SAP HANA Scale Up Servers

Create Service Profile from the Template

Configure Cisco MDS 9706 Switches

Cisco MDS Initial Configuration Dialogue

Cisco MDS Switch Configuration

Configure Fibre Channel Ports and Port Channels

Create and Configure Fiber Channel Zoning

Associate Service Profile to Cisco UCS Server

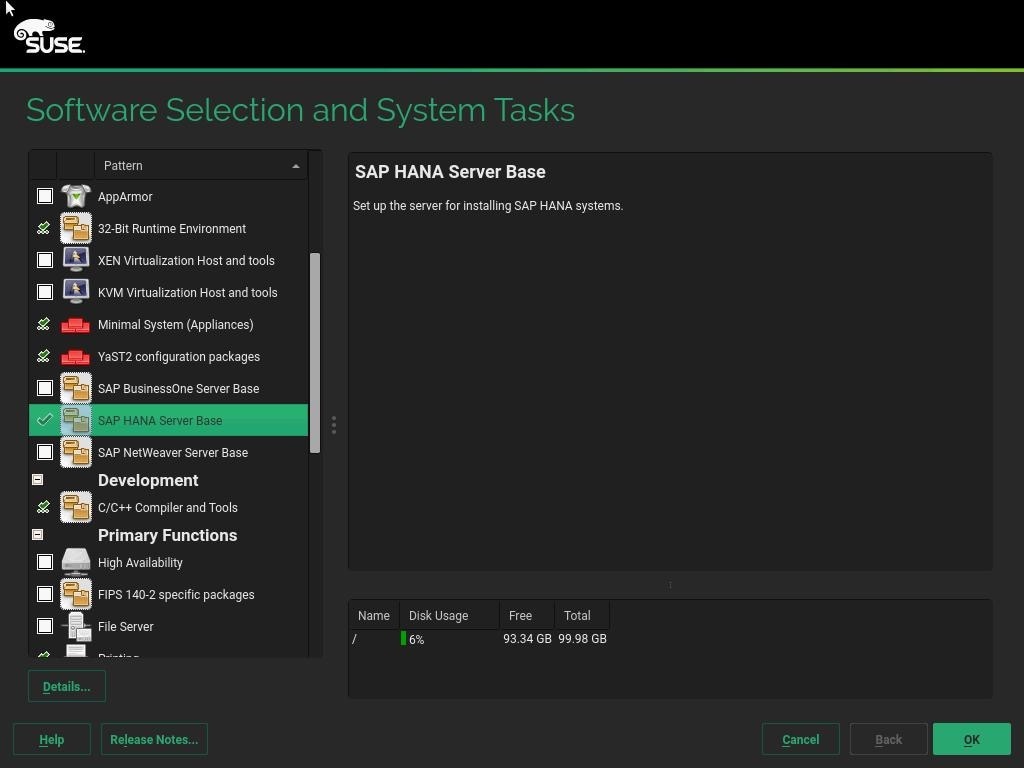

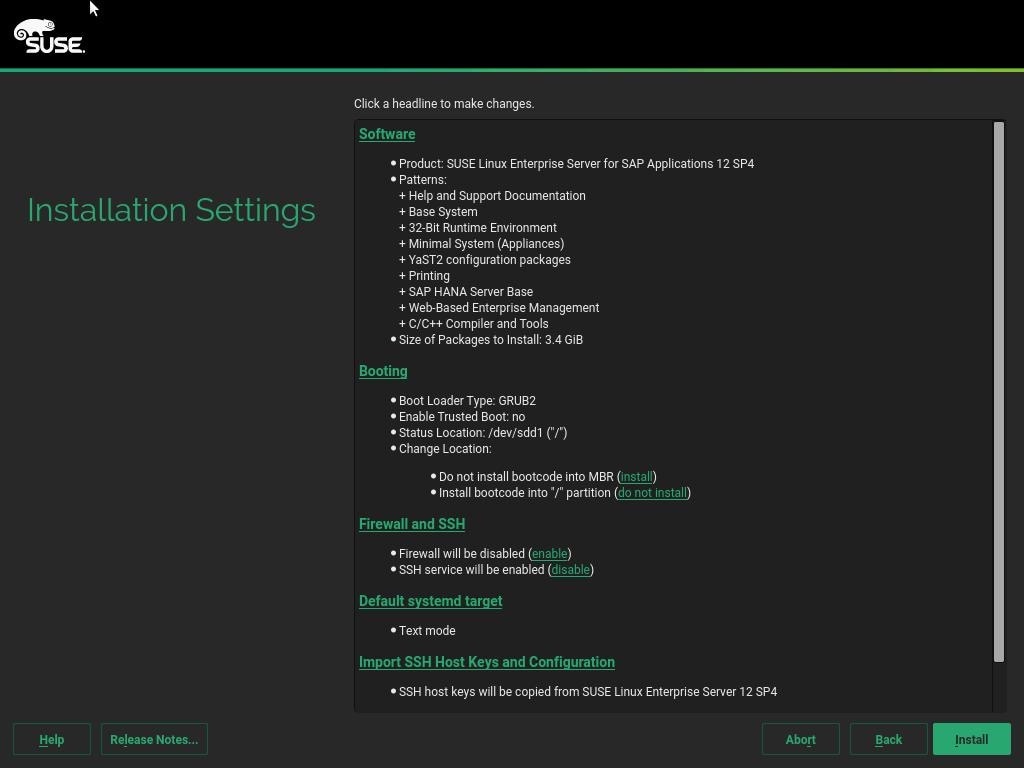

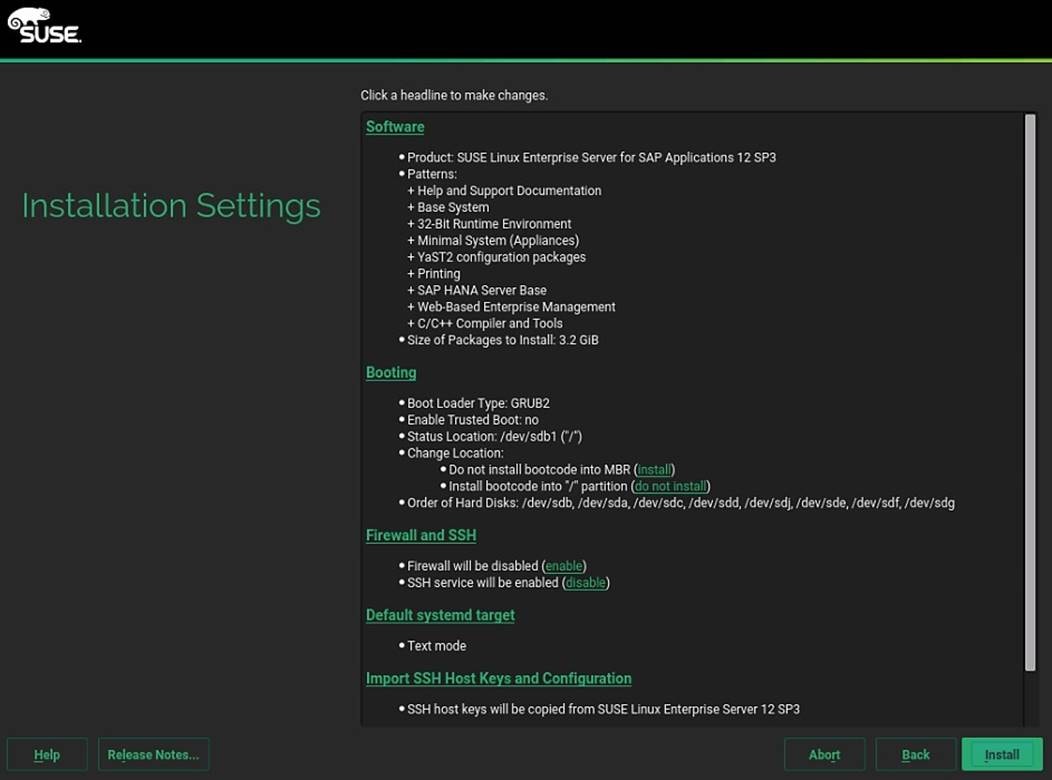

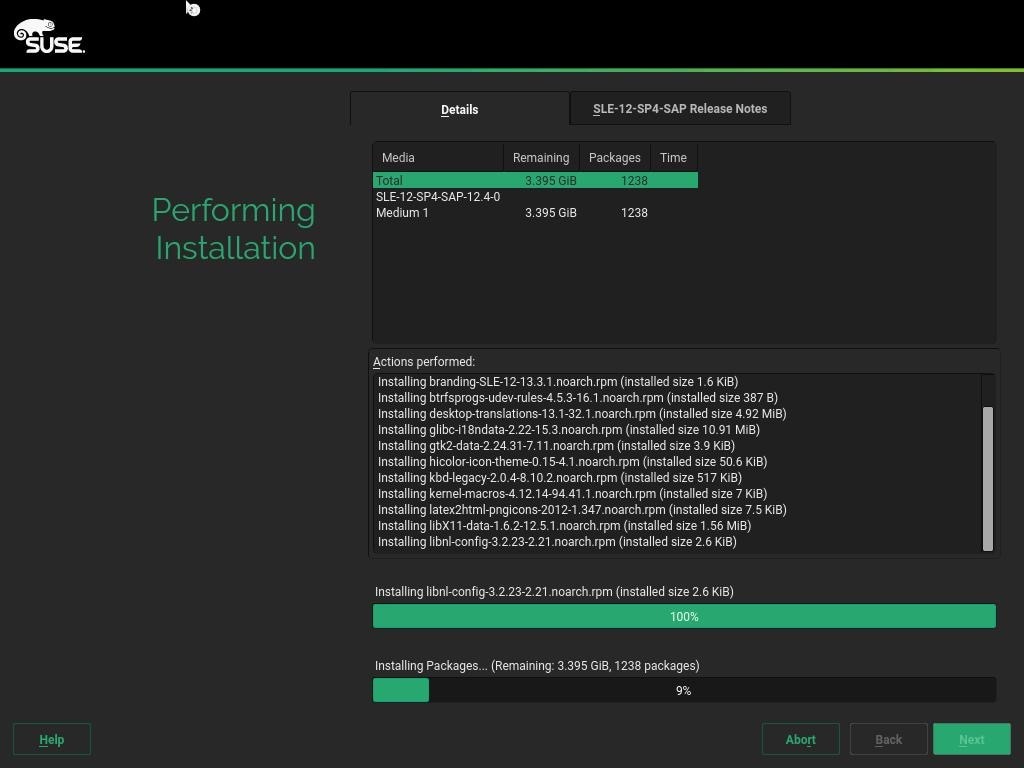

SLES for SAP 12 SP4 OS Installation

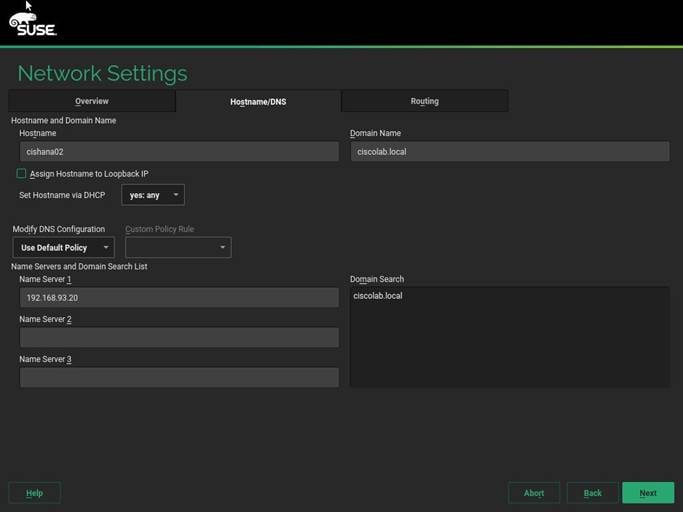

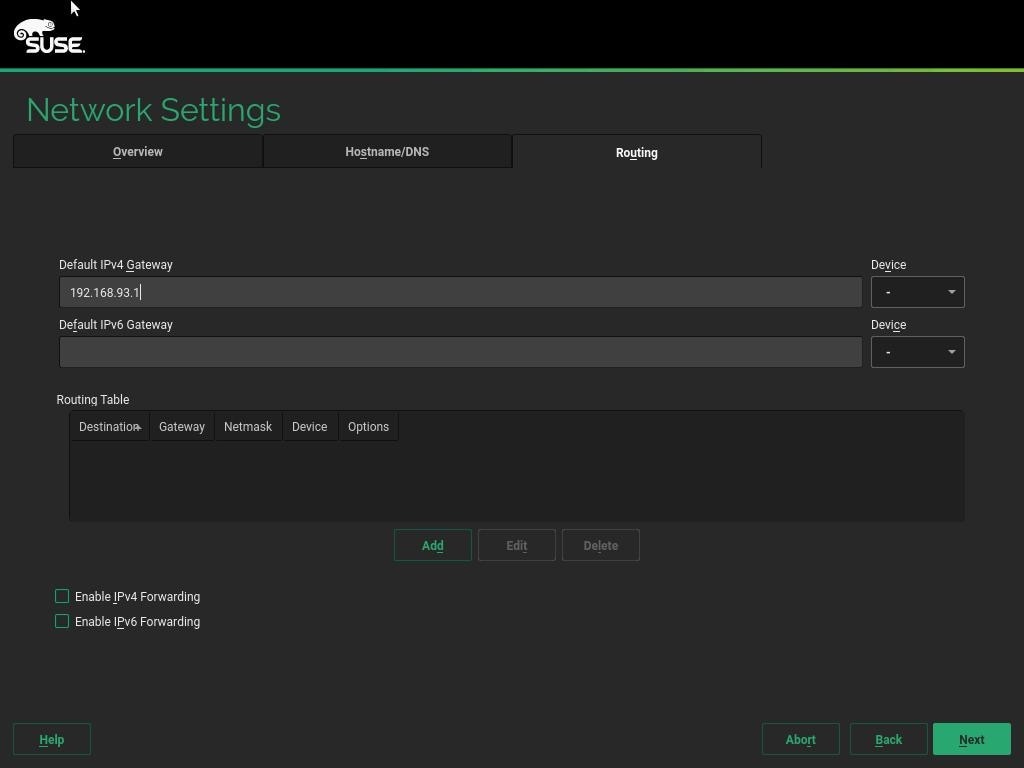

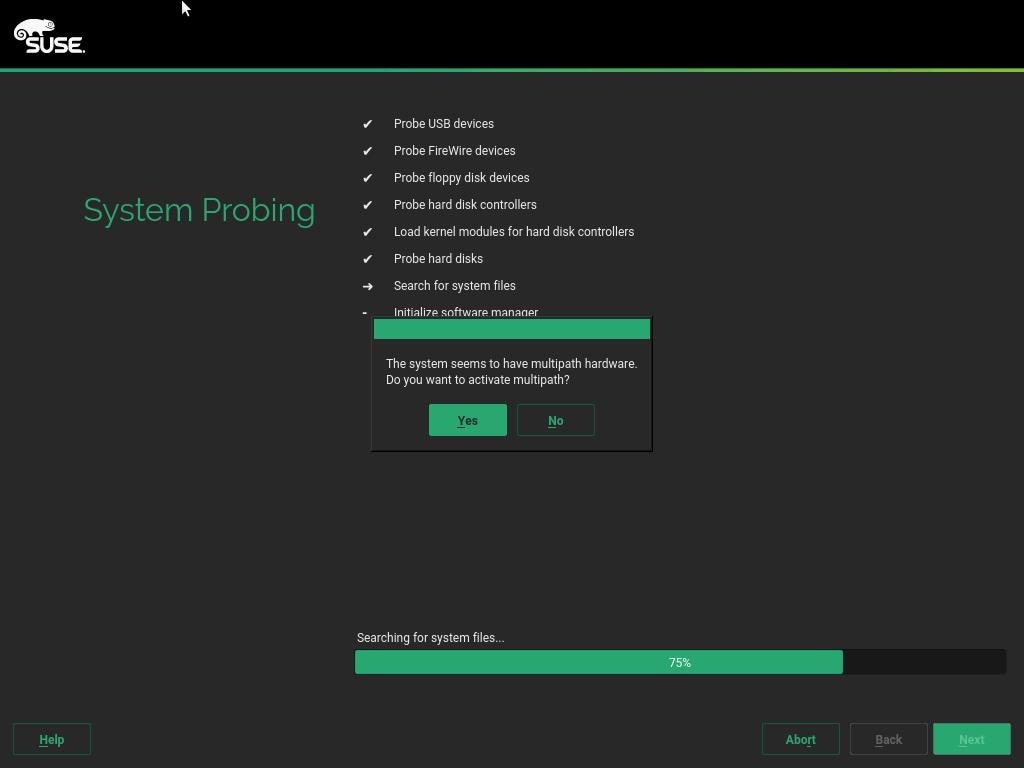

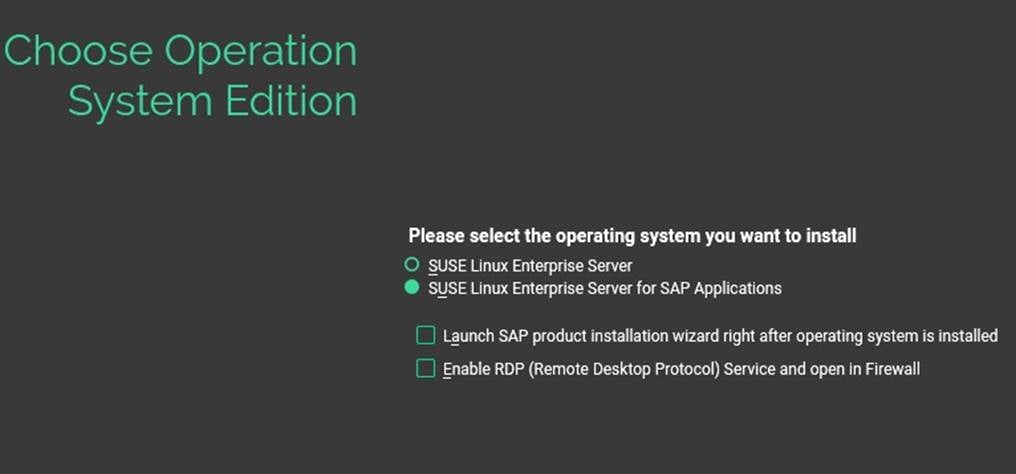

Network Services Configuration

SLES for SAP 12 SP 4 System Update and OS Customization

Install Cisco eNIC and fNIC Driver

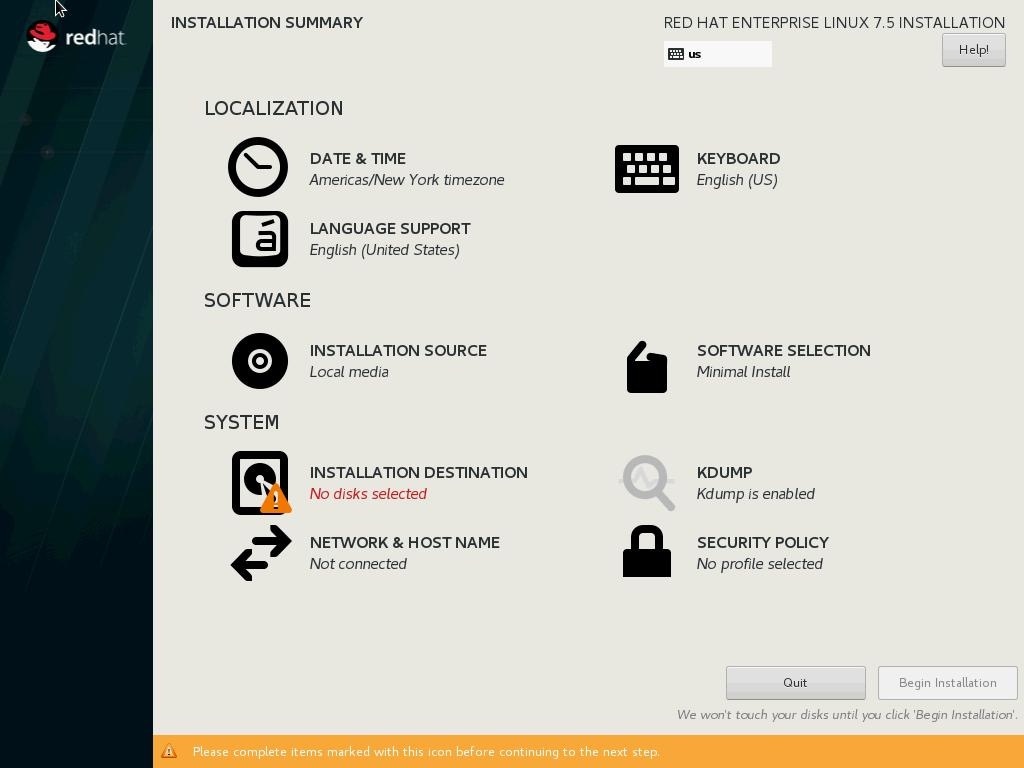

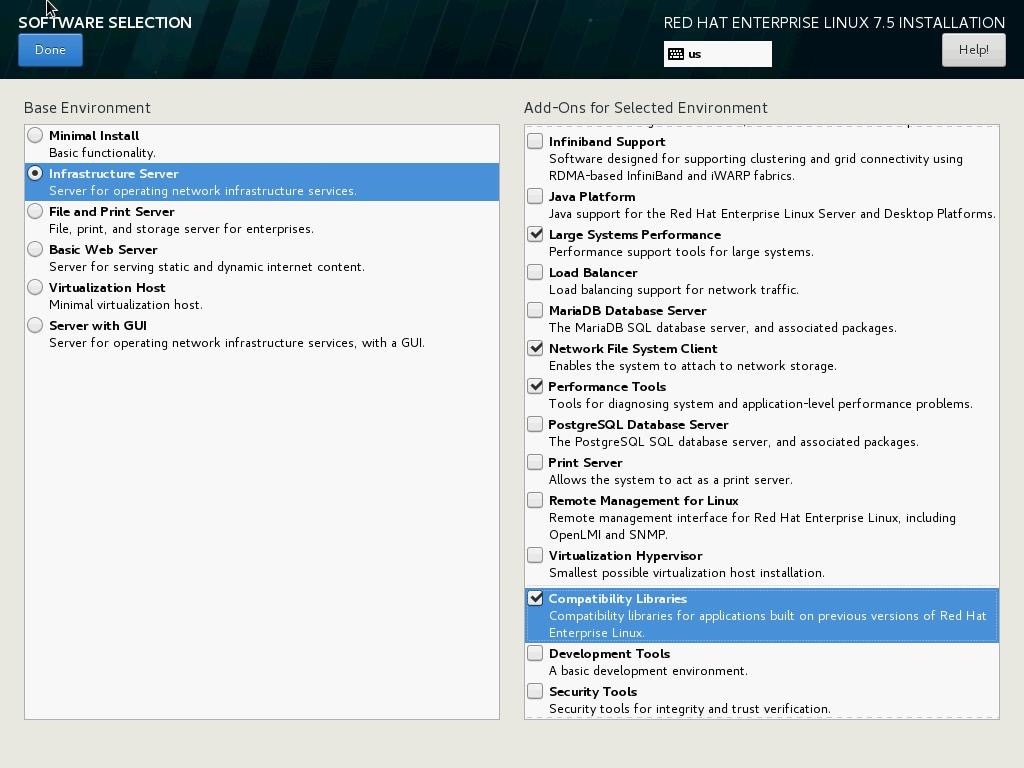

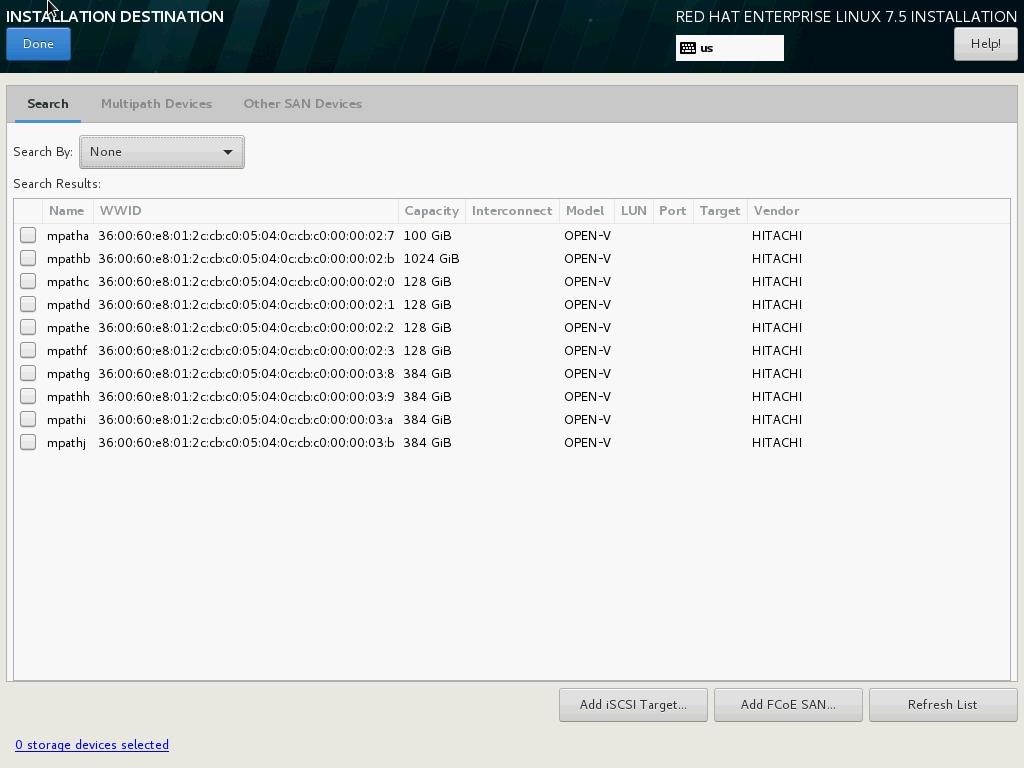

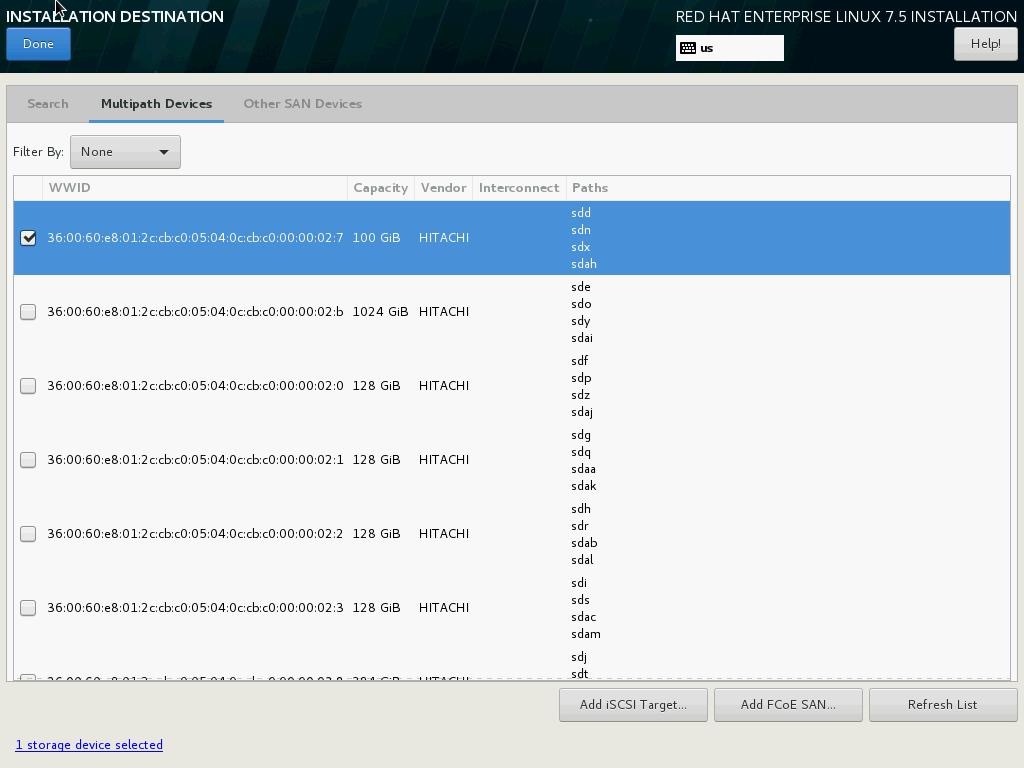

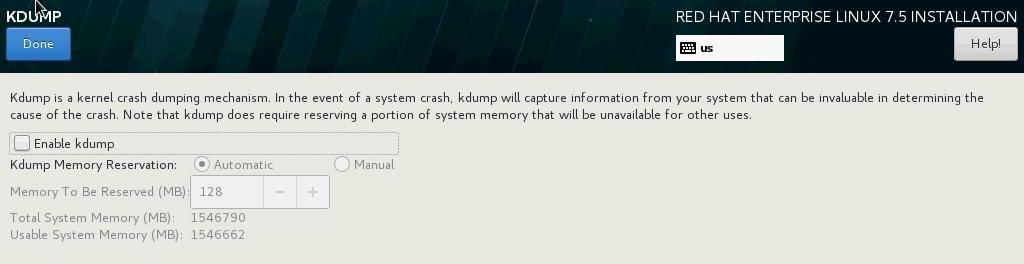

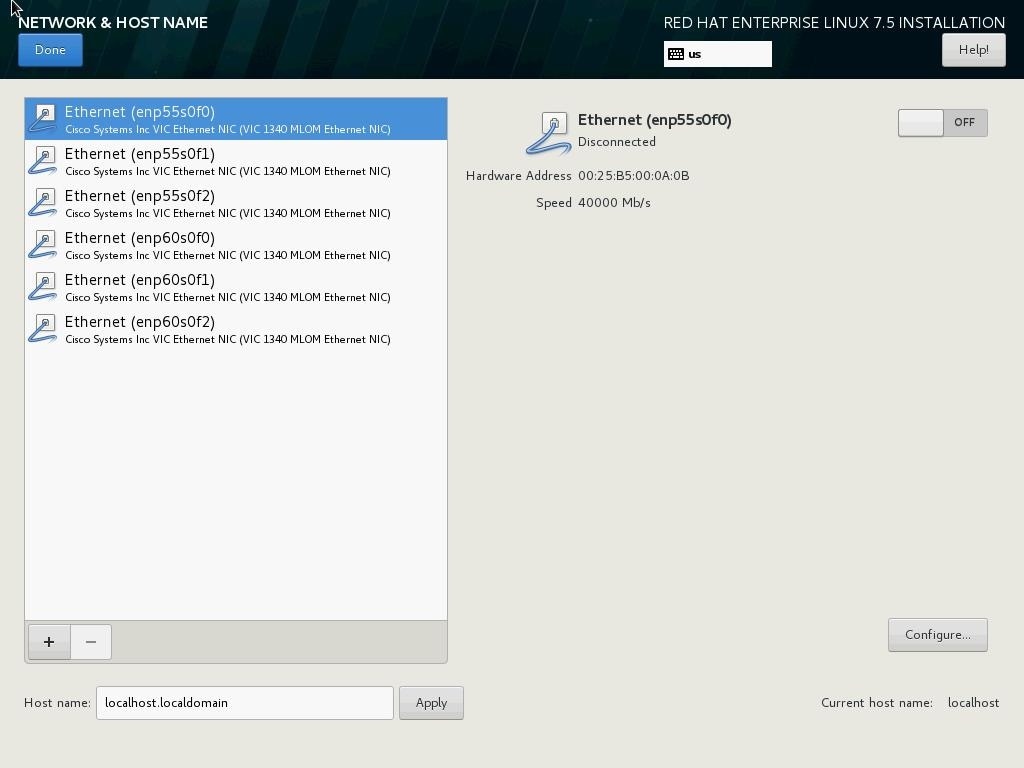

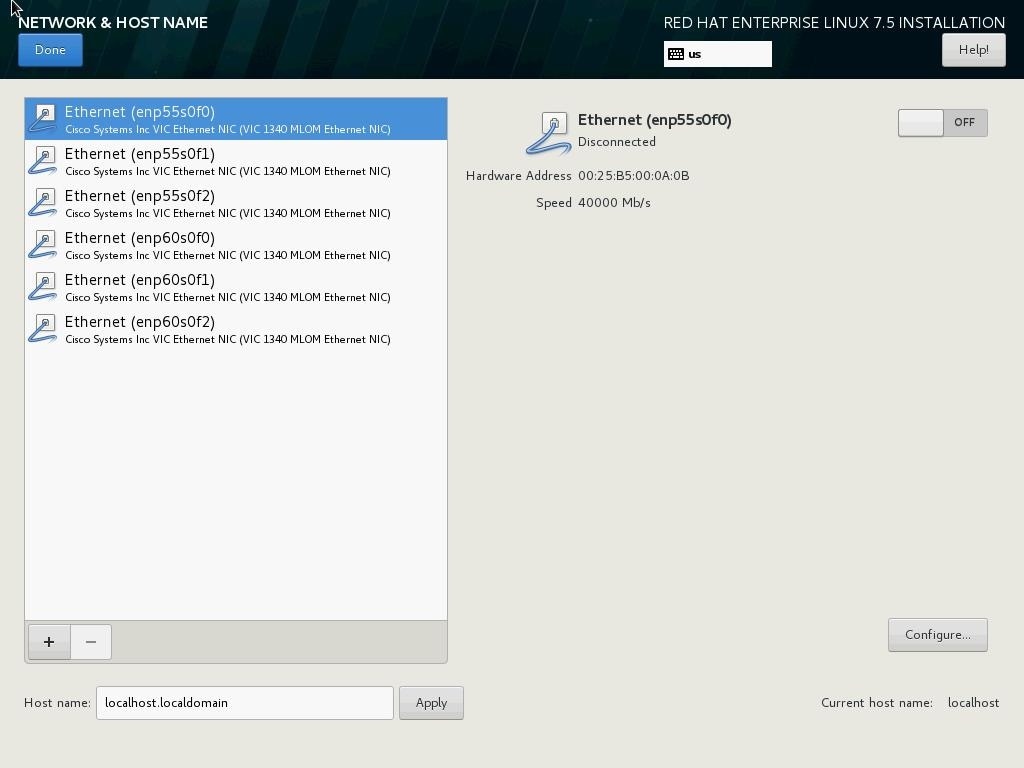

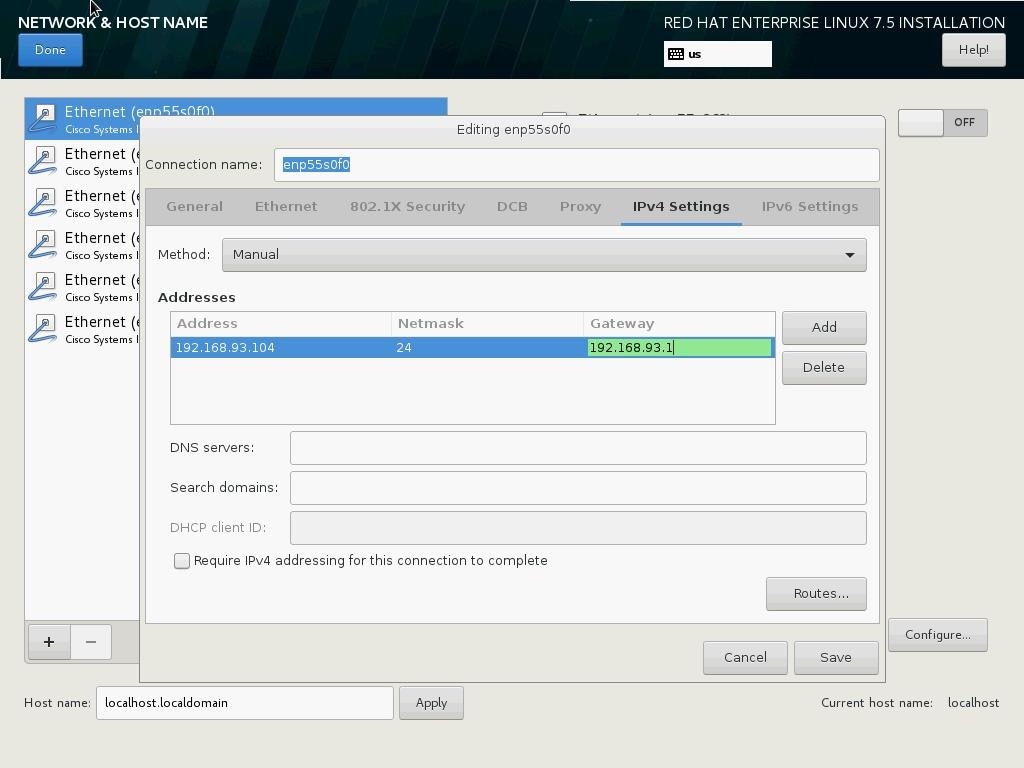

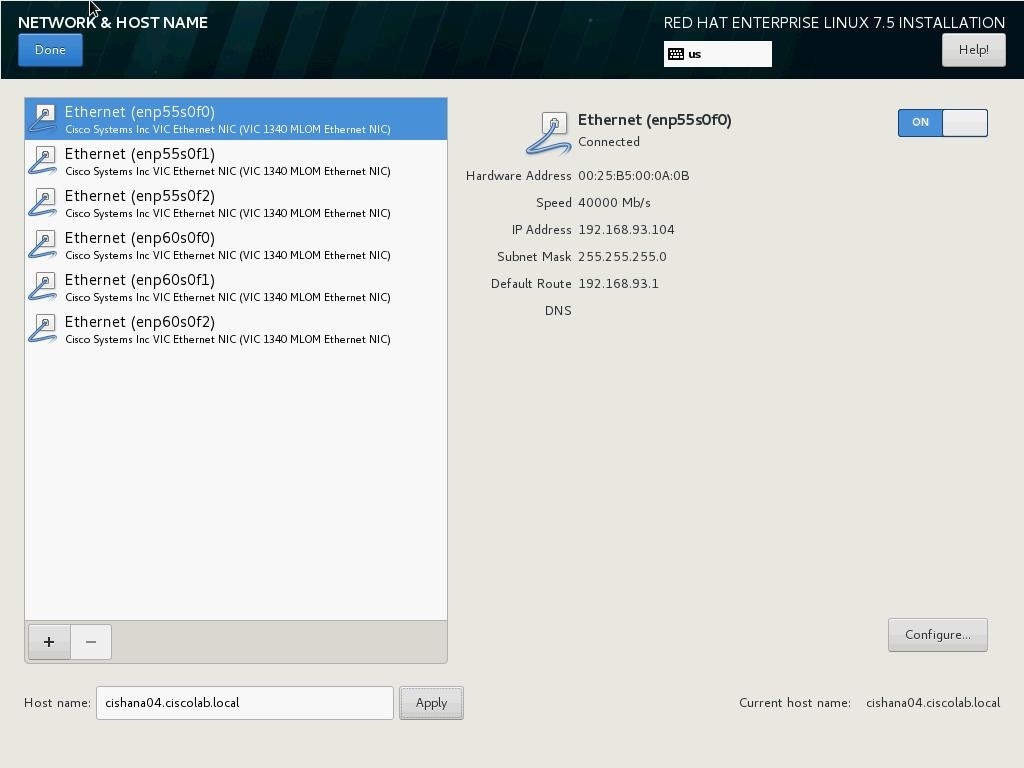

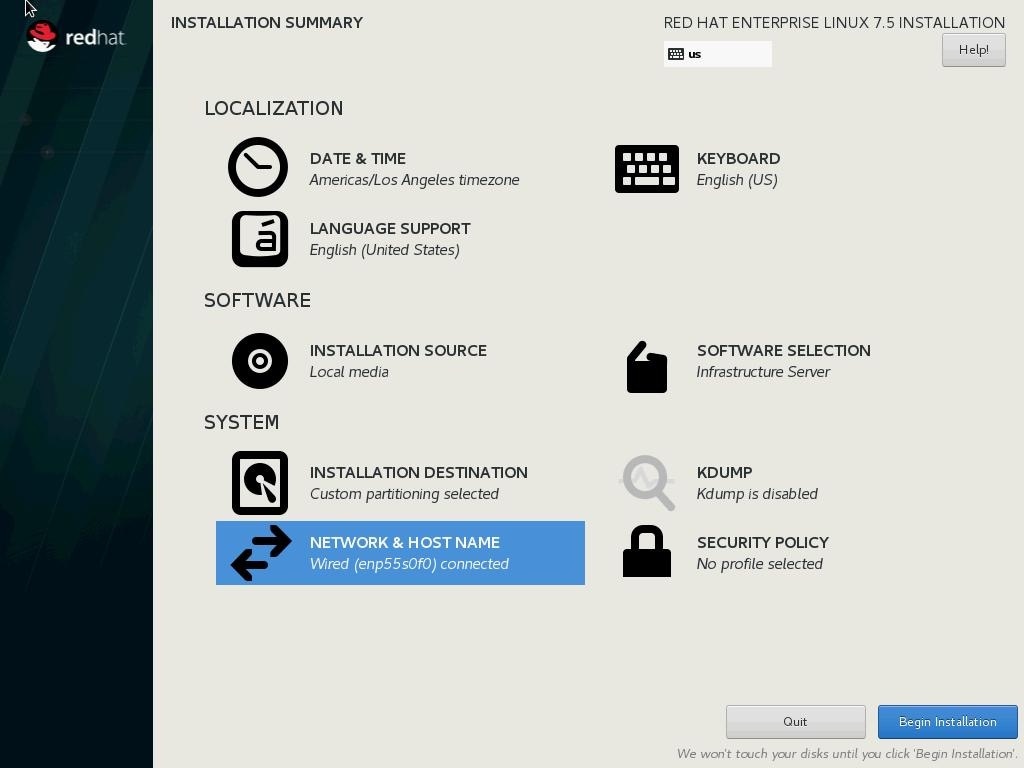

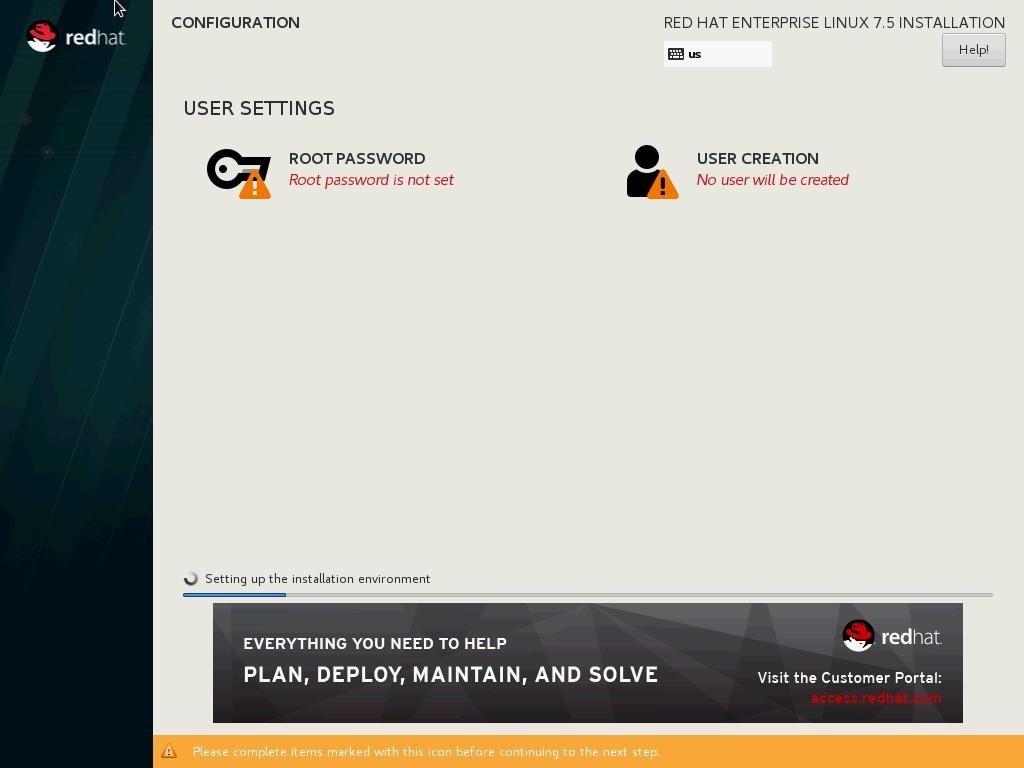

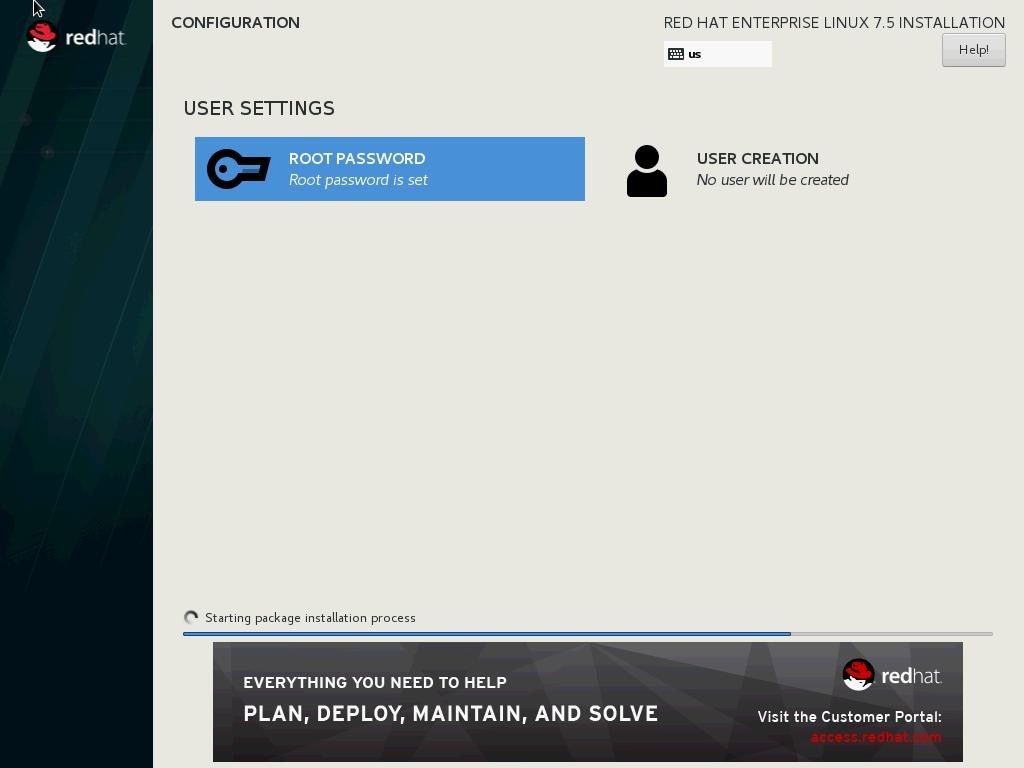

Red Hat Enterprise Linux for SAP Solutions 7.5 OS Installation

Network Services Configuration

RHEL 7.5 System Update and OS Customization for SAP HANA

Install Cisco eNIC and fNIC Driver

Configure HANA Persistent Storage Volume Configuration

SAP HANA Persistent Storage Volume Configuration for Scale-Up Deployments

Configuration Example on SUSE Linux Enterprise Server for SAP Applications

Configuration Example on Red Hat Enterprise Linux

Certified SAP HANA Hardware Directory

Cisco Validated Designs consist of systems and solutions that are designed, tested, and documented to facilitate and improve customer deployments. These designs incorporate a wide range of technologies and products into a portfolio of solutions that have been developed to address the business needs of our customers.

Cisco and Hitachi are working together to deliver a converged infrastructure solution that helps enterprise businesses meet the challenges of today and position themselves for the future. Leveraging decades of industry expertise and superior technology, this Cisco CVD offers a resilient, agile, and flexible foundation for today’s businesses. In addition, the Cisco and Hitachi partnership extends beyond a single solution, enabling businesses to benefit from their ambitious roadmap of evolving technologies such as advanced analytics, IoT, cloud, and edge capabilities. With Cisco and Hitachi, organizations can confidently take the next step in their modernization journey and prepare themselves to take advantage of new business opportunities enabled by innovative technology.

This document explains the deployment of the Cisco and Hitachi Adaptive Solutions for SAP HANA Tailored Data Center Integration, as described in the Cisco and Hitachi Adaptive Solutions for SAP HANA Tailored Data Center Integration Design Guide. The recommended solution architecture is built on Cisco Unified Computing System (Cisco UCS) using the unified software release to support the Cisco UCS hardware platforms for Cisco UCS B-Series blade servers, Cisco UCS 6300 Fabric Interconnects, Cisco Nexus 9000 Series switches, Cisco MDS Fibre Channel switches, and Hitachi VSP controllers. This architecture supports Red Hat Enterprise Linux and SUSE Linux Enterprise Server for SAP Applications.

Introduction

Enterprise data centers have a need for scalable and reliable infrastructure that can be implemented in an intelligent, policy driven manner. This implementation needs to be easy to use, and deliver application agility, so IT teams can provision applications quickly and resources can be scaled up (or down) in minutes.

Cisco and Hitachi Adaptive Solutions for SAP HANA Tailored Data Center Integration provides a best-practice data center architecture built on the collaboration of Hitachi Vantara and Cisco to meet the needs of enterprise customers. The solution orchestrates efficiency across the data path with an intelligent system that helps anticipate and navigate challenges as you grow. The architecture builds a self-optimizing data center that automatically spreads workloads across devices to ensure consistent utilization and performance. The solution helps organizations plan infrastructure growth effectively and eliminate budgeting guesswork with predictive risk profiles that identify historical trends.

Organizations experience a 5-year ROI of 528% with Cisco UCS Integrated Infrastructure solutions, and businesses experience 48% lower IT infrastructure costs. Organizations can realize a 5-year total business benefit of $20.4M per organization with Cisco UCS Integrated Infrastructure solutions, with a break-even period of nine months. Businesses experience 67% lower ongoing administrative and management costs with Cisco UCS Manager (UCSM). For more information, refer to IDC #US41084916 (2016).

This architecture is composed of the Hitachi Virtual Storage Platform (VSP) connecting through the Cisco MDS multilayer switches to Cisco Unified Computing System (Cisco UCS), and further enabled with the Cisco Nexus family of switches.

Audience

The audience for this document includes, but is not limited to, sales engineers, field consultants, professional services, IT managers, partner engineers, and customers who want to modernize their infrastructure to meet SLAs and business needs at any scale.

Purpose of this Document

This document provides a step-by-step configuration and implementation guide for the Cisco and Hitachi Adaptive Solutions for Converged Infrastructure solution. This solution features a validated reference architecture composed of:

· Cisco UCS Compute

· Cisco Nexus Switches

· Cisco Multilayer SAN Switches

· Hitachi Virtual Storage Platform

· SUSE Linux Enterprise Server and Red Hat Enterprise Linux Operating Systems

· SAP HANA

For the design decisions and technology discussion of the solution, please refer to the Cisco and Hitachi Adaptive Solutions for SAP HANA Tailored Data Center Integration Design Guide.

Solution Design

Architecture

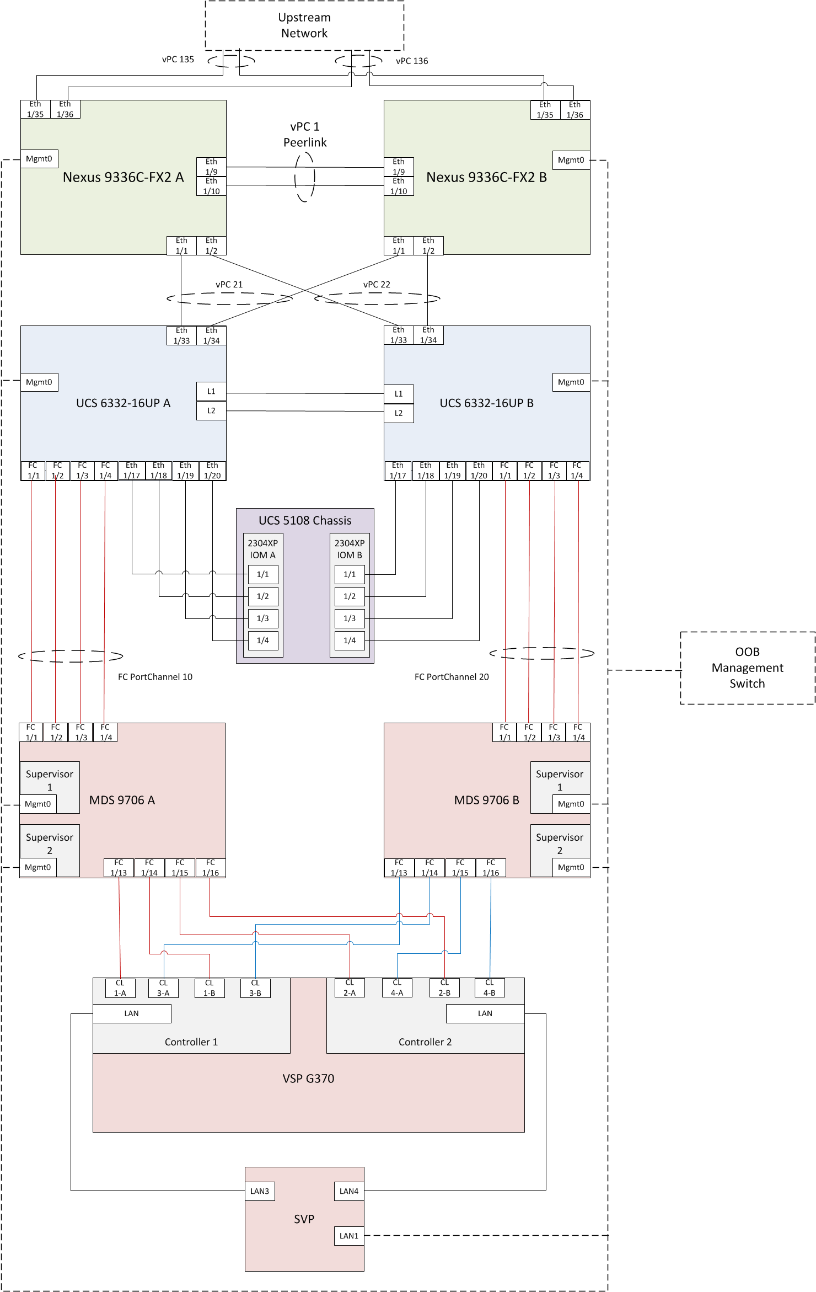

Cisco and Hitachi Adaptive Solutions for SAP HANA Tailored Data Center Integration provides an end-to-end architecture with Cisco compute, networking, and Hitachi storage that demonstrates support for multiple SAP HANA workloads with high availability and secure multi-tenancy. The architecture is built around the Cisco Unified Computing System (UCS) and the Hitachi Virtual Storage Platform (VSP), connected by Cisco MDS Multilayer SAN Switches and further enabled with Cisco Nexus Switches. These components form a powerful and scalable design, built on the best practices of Cisco and Hitachi, to create an ideal platform for running a variety of enterprise workloads with confidence. Figure 1 illustrates the physical topology of the Cisco and Hitachi Adaptive Solutions for SAP HANA Tailored Data Center Integration.

Figure 1 Cisco and Hitachi Adaptive Solutions for SAP HANA Tailored Data Center Integration Architecture

The components of this integrated architecture shown in Figure 1 are:

· Cisco Nexus 9336C-FX2 – 100Gb-capable LAN connectivity to the Cisco UCS compute resources.

· Cisco UCS 6332-16UP Fabric Interconnect – Unified management of Cisco UCS compute, and the compute’s access to storage and networks.

· Cisco UCS B200 M5 – High-powered, versatile blade server with two CPUs for SAP HANA.

· Cisco UCS B480 M5 – High-powered, versatile blade server with four CPUs for SAP HANA.

· Cisco MDS 9706 – 16Gbps Fibre Channel connectivity within the architecture, as well as interfacing to resources present in an existing data center.

· Hitachi VSP G370 – Mid-range, high-performance storage subsystem with optional all-flash configuration.

· Cisco UCS Manager – Management delivered through the Fabric Interconnect, providing stateless compute and policy-driven implementation of the servers it manages.

Hardware and Software Versions

Table 1 lists the validated hardware and software versions used for this solution. Configuration specifics are given in this deployment guide for the devices and versions listed in the following tables. Substituting components or software versions for those listed is considered acceptable within this reference architecture, but any substitution must comply with the hardware and software compatibility matrices from both Cisco and Hitachi. Refer to the following documentation:

Cisco UCS Hardware Compatibility Matrix:

https://ucshcltool.cloudapps.cisco.com/public/

Cisco Nexus and MDS Interoperability Matrix:

Cisco Nexus Recommended Releases for Nexus 9K:

https://www.cisco.com/c/en/us/td/docs/switches/datacenter/nexus9000/sw/recommended_release/b_Minimum_and_Recommended_Cisco_NX-OS_Releases_for_Cisco_Nexus_9000_Series_Switches.html

Cisco MDS Recommended Releases:

https://www.cisco.com/c/en/us/td/docs/switches/datacenter/mds9000/sw/b_MDS_NX-OS_Recommended_Releases.html

Hitachi Vantara Interoperability:

https://support.hitachivantara.com/en_us/interoperability.html

In addition, any substituted hardware or software may have different configurations from what is detailed in this guide and will require a thorough evaluation of the substituted product reference documents.

Table 1 Validated Hardware and Software

| Component | | Software Version/Firmware Version |
| --- | --- | --- |
| Network | Cisco Nexus 9336C-FX2 | 7.0(3)I7(5a) |
| Compute | Cisco UCS Fabric Interconnect 6332 | 4.0(1c) |
| | Cisco UCS 2304 IOM | 4.0(1c) |
| | Cisco UCS B480 M5 Blade Server | 4.0(1c) |
| | Cisco UCS B200 M5 Blade Server | 4.0(1c) |
| | SUSE Linux Enterprise Server for SAP Applications | SLES for SAP 12 SP4 |
| | Red Hat Enterprise Linux for SAP Solutions | RHEL 7.5 |
| Storage | Hitachi VSP G370 | 88-02-03-60/00 |
| | Cisco MDS 9706 (DS-X97-SF1-K9 & DS-X9648-1536K9) | 8.3(1) |

Configuration Guidelines

The information in this section is intended to enable you to fully configure the customer environment. In this process, various steps require you to insert customer-specific naming conventions, IP addresses, and VLAN schemes, as well as to record appropriate MAC addresses. Table 2 lists the configuration variables that are used throughout this document. Complete this table with the site-specific values and use it when carrying out the configuration steps in this document.

The Cisco UCS Fabric Interconnects are similarly configured. Additionally, this document details the steps for provisioning multiple Cisco UCS hosts, and these are identified sequentially: HANA-Server01, HANA-Server02, and so on. Finally, to indicate that you should include information pertinent to your environment in a given step, <text> appears as part of the command structure. Review the following example for the network port vlan create command:

Usage:

network port vlan create ?

[-node] <nodename> Node

{ [-vlan-name] {<netport>|<ifgrp>} VLAN Name

| -port {<netport>|<ifgrp>} Associated Network Port

[-vlan-id] <integer> } Network Switch VLAN Identifier

Example:

network port vlan create -node <node01> -vlan-name i0a-<vlan id>

Table 2 Configuration Variables

| Variable | Description | Customer Implementation Value |
| --- | --- | --- |
| <<var_nexus_A_hostname>> | Cisco Nexus 9336C-FX2-A host name | |
| <<var_nexus_A_mgmt0_ip>> | Out-of-band Cisco Nexus 9336C-FX2-A management IP address | |
| <<var_nexus_B_hostname>> | Cisco Nexus 9336C-FX2-B host name | |
| <<var_nexus_B_mgmt0_ip>> | Out-of-band Cisco Nexus 9336C-FX2-B management IP address | |
| <<var_mgmt_mask>> | Out-of-band management network netmask | |
| <<var_mgmt_gateway>> | Out-of-band management network default gateway | |
| <<var_ucs_clustername>> | Cisco UCS Manager cluster host name | |
| <<var_ucsa_mgmt_ip>> | Cisco UCS 6332-16UP-A out-of-band management IP address | |
| <<var_ucsb_mgmt_ip>> | Cisco UCS 6332-16UP-B out-of-band management IP address | |
| <<var_ucs_cluster_ip>> | Cisco UCS Manager cluster IP address | |
| <<var_hitachi_svp_ip>> | Out-of-band management IP for Hitachi storage management network | |
| <<var_hitachi_controller-1_mgmt_ip>> | Out-of-band management IP for Hitachi storage Controller 1 | |
| <<var_hitachi_controller-2_mgmt_ip>> | Out-of-band management IP for Hitachi storage Controller 2 | |
| <<var_dns_domain_name>> | DNS domain name | |
| <<var_nameserver_ip>> | DNS server IP(s) | |
| <<var_global_ntp_server_ip>> | NTP server IP address | |
| <<var_mds-a_name>> | Cisco MDS 9706 A hostname | |
| <<var_mds-a_ip>> | Cisco MDS 9706 A Management IP Address | |
| <<var_mds-b_name>> | Cisco MDS 9706 B hostname | |
| <<var_mds-b_ip>> | Cisco MDS 9706 B Management IP Address | |
| <<var_nexus_vpc_domain_id>> | Unique Cisco Nexus switch VPC domain ID for Cisco Nexus 9336C-FX2 Switch pair | |
| <<var_mgmt_vlan_id>> | Management Network VLAN | |
| <<var_backup_vlan_id>> | Backup Network for HANA VLAN ID | |
| <<var_client_vlan_id>> | Client Network for HANA VLAN ID | |
| <<var_appserver_vlan_id>> | Application Server Network for HANA VLAN ID | |
| <<var_datasource_vlan_id>> | Data source Network for HANA VLAN ID | |
| <<var_replication_vlan_id>> | Replication Network for HANA VLAN ID | |
| <<var_fc-pc_a_id>> | Fibre Channel - Port Channel ID for MDS A | |
| <<var_fc-pc_b_id>> | Fibre Channel - Port Channel ID for MDS B | |
| <<var_san_a_id>> | VSAN ID for MDS A | |
| <<var_san_b_id>> | VSAN ID for MDS B | |
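Once Table 2 is filled in, the `<<var_...>>` placeholders in the command listings can be substituted mechanically rather than by hand. The following is a minimal Python sketch of that idea, not part of the validated procedure. The `SITE_VALUES` entries and the `render` helper are hypothetical names introduced for illustration; the values shown are examples only.

```python
import re

# Hypothetical site values; replace with the entries recorded in Table 2.
SITE_VALUES = {
    "var_nexus_A_hostname": "nx9k-a",
    "var_nexus_B_hostname": "nx9k-b",
    "var_mgmt_vlan_id": "76",
}

# Matches <<name>> and tolerates stray spaces inside the brackets.
PLACEHOLDER = re.compile(r"<<\s*([^<>]+?)\s*>>")


def render(template, values):
    """Replace every <<var_name>> placeholder; fail loudly on unknown names."""
    wanted = {m.group(1) for m in PLACEHOLDER.finditer(template)}
    missing = sorted(wanted - set(values))
    if missing:
        raise KeyError("no site value recorded for: " + ", ".join(missing))
    return PLACEHOLDER.sub(lambda m: values[m.group(1)], template)


if __name__ == "__main__":
    print(render("vlan <<var_mgmt_vlan_id>>\n  name HANA-Node-Mgmt", SITE_VALUES))
```

Raising an error on an unrecorded variable is a deliberate choice in this sketch: a placeholder left in a pasted command is harder to spot than a failed render.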

Physical Cabling

This section explains the cabling examples used in the validated environment. To make connectivity clear in this example, the tables include both the local and remote port locations.

This document assumes that out-of-band management ports are plugged into an existing management infrastructure at the deployment site. The upstream network from the Nexus 9336C-FX2 switches is out of scope of this document, with only the assumption that these switches will connect to the upstream switch or switches with a virtual Port Channel (vPC).

Figure 2 shows the cabling configuration used in this validated design.

Table 3 through Table 8 provide the details of the specific port connections with the cables used in this deployment guide.

Table 3 Cisco Nexus 9336C-FX2 A Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Cisco Nexus 9336C-FX2 A | Eth1/1 | 40GbE | Cisco UCS fabric interconnect A | 1/33 |
| Cisco Nexus 9336C-FX2 A | Eth1/2 | 40GbE | Cisco UCS fabric interconnect B | 1/33 |
| Cisco Nexus 9336C-FX2 A | Eth1/9 | 40GbE | Nx9336C-FX2-B | 1/9 |
| Cisco Nexus 9336C-FX2 A | Eth1/10 | 40GbE | Nx9336C-FX2-B | 1/10 |
| Cisco Nexus 9336C-FX2 A | Eth1/31 | 40GbE | Cisco UCS fabric interconnect A (optional) | 1/31 |
| Cisco Nexus 9336C-FX2 A | Eth1/32 | 40GbE | Cisco UCS fabric interconnect B (optional) | 1/31 |
| Cisco Nexus 9336C-FX2 A | Eth1/35 | 40GbE | Customer Uplink Switch -A | Any |
| Cisco Nexus 9336C-FX2 A | Eth1/36 | 40GbE | Customer Uplink Switch -B | Any |
| Cisco Nexus 9336C-FX2 A | MGMT0 | GbE | Customer Management Switch | Any |

Table 4 Cisco Nexus 9336C-FX2 B Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Cisco Nexus 9336C-FX2 B | Eth1/1 | 40GbE | Cisco UCS fabric interconnect A | 1/34 |
| Cisco Nexus 9336C-FX2 B | Eth1/2 | 40GbE | Cisco UCS fabric interconnect B | 1/34 |
| Cisco Nexus 9336C-FX2 B | Eth1/9 | 40GbE | Nx9336C-FX2-A | 1/9 |
| Cisco Nexus 9336C-FX2 B | Eth1/10 | 40GbE | Nx9336C-FX2-A | 1/10 |
| Cisco Nexus 9336C-FX2 B | Eth1/31 | 40GbE | Cisco UCS fabric interconnect A (optional) | 1/32 |
| Cisco Nexus 9336C-FX2 B | Eth1/32 | 40GbE | Cisco UCS fabric interconnect B (optional) | 1/32 |
| Cisco Nexus 9336C-FX2 B | Eth1/35 | 40GbE | Customer Uplink Switch -A | Any |
| Cisco Nexus 9336C-FX2 B | Eth1/36 | 40GbE | Customer Uplink Switch -B | Any |
| Cisco Nexus 9336C-FX2 B | MGMT0 | GbE | Customer Management Switch | Any |

Table 5 Cisco UCS 6332-16UP A Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Cisco UCS 6332-16UP FI A | Eth1/1 | FC uplink | MDS-A | 1/1 |
| Cisco UCS 6332-16UP FI A | Eth1/2 | FC uplink | MDS-A | 1/2 |
| Cisco UCS 6332-16UP FI A | Eth1/3 | FC uplink | MDS-A | 1/3 |
| Cisco UCS 6332-16UP FI A | Eth1/4 | FC uplink | MDS-A | 1/4 |
| Cisco UCS 6332-16UP FI A | Eth1/17 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM A | 1/1 |
| Cisco UCS 6332-16UP FI A | Eth1/18 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM A | 1/2 |
| Cisco UCS 6332-16UP FI A | Eth1/19 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM A | 1/3 |
| Cisco UCS 6332-16UP FI A | Eth1/20 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM A | 1/4 |
| Cisco UCS 6332-16UP FI A | Eth1/21 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM A | 1/1 |
| Cisco UCS 6332-16UP FI A | Eth1/22 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM A | 1/2 |
| Cisco UCS 6332-16UP FI A | Eth1/23 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM A | 1/3 |
| Cisco UCS 6332-16UP FI A | Eth1/24 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM A | 1/4 |
| Cisco UCS 6332-16UP FI A | Eth1/31 | 40GbE | Nx9336C-FX2-A (optional) | 1/31 |
| Cisco UCS 6332-16UP FI A | Eth1/32 | 40GbE | Nx9336C-FX2-B (optional) | 1/31 |
| Cisco UCS 6332-16UP FI A | Eth1/33 | 40GbE | Nx9336C-FX2-A | 1/1 |
| Cisco UCS 6332-16UP FI A | Eth1/34 | 40GbE | Nx9336C-FX2-B | 1/1 |
| Cisco UCS 6332-16UP FI A | MGMT0 | GbE | Customer Management Switch | Any |
| Cisco UCS 6332-16UP FI A | L1 | GbE | Cisco UCS fabric interconnect B | L1 |
| Cisco UCS 6332-16UP FI A | L2 | GbE | Cisco UCS fabric interconnect B | L2 |

Table 6 Cisco UCS 6332-16UP B Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Cisco UCS 6332-16UP FI B | Eth1/1 | FC uplink | MDS-B | 1/1 |
| Cisco UCS 6332-16UP FI B | Eth1/2 | FC uplink | MDS-B | 1/2 |
| Cisco UCS 6332-16UP FI B | Eth1/3 | FC uplink | MDS-B | 1/3 |
| Cisco UCS 6332-16UP FI B | Eth1/4 | FC uplink | MDS-B | 1/4 |
| Cisco UCS 6332-16UP FI B | Eth1/17 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM B | 1/1 |
| Cisco UCS 6332-16UP FI B | Eth1/18 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM B | 1/2 |
| Cisco UCS 6332-16UP FI B | Eth1/19 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM B | 1/3 |
| Cisco UCS 6332-16UP FI B | Eth1/20 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM B | 1/4 |
| Cisco UCS 6332-16UP FI B | Eth1/21 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM B | 1/1 |
| Cisco UCS 6332-16UP FI B | Eth1/22 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM B | 1/2 |
| Cisco UCS 6332-16UP FI B | Eth1/23 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM B | 1/3 |
| Cisco UCS 6332-16UP FI B | Eth1/24 | 40GbE | Cisco UCS 5108 Chassis 1 – IOM B | 1/4 |
| Cisco UCS 6332-16UP FI B | Eth1/31 | 40GbE | Nx9336C-FX2-A (optional) | 1/32 |
| Cisco UCS 6332-16UP FI B | Eth1/32 | 40GbE | Nx9336C-FX2-B (optional) | 1/32 |
| Cisco UCS 6332-16UP FI B | Eth1/33 | 40GbE | Nx9336C-FX2-A | 1/2 |
| Cisco UCS 6332-16UP FI B | Eth1/34 | 40GbE | Nx9336C-FX2-B | 1/2 |
| Cisco UCS 6332-16UP FI B | MGMT0 | GbE | Customer Management Switch | Any |
| Cisco UCS 6332-16UP FI B | L1 | GbE | Cisco UCS fabric interconnect A | L1 |
| Cisco UCS 6332-16UP FI B | L2 | GbE | Cisco UCS fabric interconnect A | L2 |

Table 7 Cisco MDS 9706 A Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Cisco MDS 9706 A | Eth1/1 | FC uplink | Cisco UCS fabric interconnect A | 1/1 |
| Cisco MDS 9706 A | Eth1/2 | FC uplink | Cisco UCS fabric interconnect A | 1/2 |
| Cisco MDS 9706 A | Eth1/3 | FC uplink | Cisco UCS fabric interconnect A | 1/3 |
| Cisco MDS 9706 A | Eth1/4 | FC uplink | Cisco UCS fabric interconnect A | 1/4 |
| Cisco MDS 9706 A | Eth1/13 | FC uplink | Hitachi VSP G370 – Controller 1 | CL1-A |
| Cisco MDS 9706 A | Eth1/14 | FC uplink | Hitachi VSP G370 – Controller 1 | CL1-B |
| Cisco MDS 9706 A | Eth1/15 | FC uplink | Hitachi VSP G370 – Controller 2 | CL2-A |
| Cisco MDS 9706 A | Eth1/16 | FC uplink | Hitachi VSP G370 – Controller 2 | CL2-B |
| Cisco MDS 9706 A | MGMT0 | GbE | Customer Management Switch | Any |

Table 8 Cisco MDS 9706 B Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Cisco MDS 9706 B | Eth1/1 | FC uplink | Cisco UCS fabric interconnect B | 1/1 |
| Cisco MDS 9706 B | Eth1/2 | FC uplink | Cisco UCS fabric interconnect B | 1/2 |
| Cisco MDS 9706 B | Eth1/3 | FC uplink | Cisco UCS fabric interconnect B | 1/3 |
| Cisco MDS 9706 B | Eth1/4 | FC uplink | Cisco UCS fabric interconnect B | 1/4 |
| Cisco MDS 9706 B | Eth1/13 | FC uplink | Hitachi VSP G370 – Controller 1 | CL3-A |
| Cisco MDS 9706 B | Eth1/14 | FC uplink | Hitachi VSP G370 – Controller 1 | CL3-B |
| Cisco MDS 9706 B | Eth1/15 | FC uplink | Hitachi VSP G370 – Controller 2 | CL4-A |
| Cisco MDS 9706 B | Eth1/16 | FC uplink | Hitachi VSP G370 – Controller 2 | CL4-B |
| Cisco MDS 9706 B | MGMT0 | GbE | Customer Management Switch | Any |
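Because each link in the cabling tables is recorded from both ends, a cabling plan can be checked for symmetry before anything is plugged in. The sketch below is one way to do that in Python, assuming the plan has been transcribed into a dictionary. The shortened device labels (`N9K-A`, `FI-A`, and so on) and the `asymmetric_links` helper are hypothetical, and only a sample of the Ethernet uplink rows is shown.

```python
# Each entry: (local device, local port) -> (remote device, remote port).
# Sample rows transcribed from Tables 3 through 6; the device labels are
# shortened for this sketch and are not names used by any Cisco tool.
LINKS = {
    ("N9K-A", "1/1"): ("FI-A", "1/33"),
    ("N9K-A", "1/2"): ("FI-B", "1/33"),
    ("N9K-B", "1/1"): ("FI-A", "1/34"),
    ("N9K-B", "1/2"): ("FI-B", "1/34"),
    ("FI-A", "1/33"): ("N9K-A", "1/1"),
    ("FI-A", "1/34"): ("N9K-B", "1/1"),
    ("FI-B", "1/33"): ("N9K-A", "1/2"),
    ("FI-B", "1/34"): ("N9K-B", "1/2"),
}


def asymmetric_links(links):
    """Return local endpoints whose remote end does not point back at them."""
    return sorted(
        local for local, remote in links.items()
        if links.get(remote) != local
    )


if __name__ == "__main__":
    problems = asymmetric_links(LINKS)
    print("cabling plan is symmetric" if not problems else problems)
```

An empty result means every transcribed link agrees from both ends. Entries whose remote port is "Any" (customer uplinks and management ports) have no second record and would need to be left out of such a check.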

Cisco Nexus 9000 Series Switch Network Configuration

The following section provides a detailed procedure for configuring the Cisco Nexus 9000 Switches for the SAP HANA environment. The Nexus switch configuration covers the basic L2 and L3 functionality for the application environment used in the validation environment hosted by the UCS domains. The application gateways are hosted by the pair of Nexus switches, but primary routing is passed on to an existing router that is upstream of the converged infrastructure. This upstream router needs to be aware of any networks created on the Nexus switches, but configuration of an upstream router is beyond the scope of this deployment guide.

The switch configuration in this section is based on the cabling plan described in the Physical Cabling section. If the systems are connected on different ports, configure the switches accordingly, following the guidelines described in this section.

The configuration steps detailed in this section provide guidance for configuring the Cisco Nexus 9000 running release 7.0(3)I7(5a) within a multi-VDC environment.

Cisco Nexus 9000 Initial Configuration

Complete this dialogue on each switch, using a serial connection to the console port of the switch, unless Power on Auto Provisioning is being used.

Abort Power on Auto Provisioning and continue with normal setup? (yes/no) [n]: yes

---- System Admin Account Setup ----

Do you want to enforce secure password standard (yes/no) [y]:

Enter the password for "admin":

Confirm the password for "admin":

---- Basic System Configuration Dialog VDC: 1 ----

This setup utility will guide you through the basic configuration of

the system. Setup configures only enough connectivity for management

of the system.

Please register Cisco Nexus9000 Family devices promptly with your

supplier. Failure to register may affect response times for initial

service calls. Nexus9000 devices must be registered to receive

entitled support services.

Press Enter at anytime to skip a dialog. Use ctrl-c at anytime

to skip the remaining dialogs.

Would you like to enter the basic configuration dialog (yes/no): yes

Create another login account (yes/no) [n]:

Configure read-only SNMP community string (yes/no) [n]:

Configure read-write SNMP community string (yes/no) [n]:

Enter the switch name : <<var_nexus_A_hostname>>|<<var_nexus_B_hostname>>

Continue with Out-of-band (mgmt0) management configuration? (yes/no) [y]:

Mgmt0 IPv4 address : <<var_nexus_A_mgmt0_ip>>|<<var_nexus_B_mgmt0_ip>>

Mgmt0 IPv4 netmask : <<var_mgmt_mask>>

Configure the default gateway? (yes/no) [y]:

IPv4 address of the default gateway : <<var_mgmt_gateway>>

Configure advanced IP options? (yes/no) [n]:

Enable the telnet service? (yes/no) [n]:

Enable the ssh service? (yes/no) [y]:

Type of ssh key you would like to generate (dsa/rsa) [rsa]:

Number of rsa key bits <1024-2048> [1024]:

Configure the ntp server? (yes/no) [n]: y

NTP server IPv4 address: <<var_global_ntp_server_ip>>

Configure default interface layer (L3/L2) [L2]:

Configure default switchport interface state (shut/noshut) [noshut]: shut

Configure CoPP system profile (strict/moderate/lenient/dense) [strict]:

The following configuration will be applied:

password strength-check

switchname <<var_nexus_A_hostname>>|<<var_nexus_B_hostname>>

vrf context management

ip route 0.0.0.0/0 <<var_mgmt_gateway>>

exit

no feature telnet

ssh key rsa 1024 force

feature ssh

system default switchport

system default switchport shutdown

copp profile strict

interface mgmt0

ip address <<var_nexus_A_mgmt0_ip>>|<<var_nexus_B_mgmt0_ip>> <<var_mgmt_mask>>

no shutdown

Would you like to edit the configuration? (yes/no) [n]:

Use this configuration and save it? (yes/no) [y]:
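The setup dialog accepts whatever management address, netmask, and gateway are typed in, so it can help to confirm beforehand that the planned addresses share a subnet with the gateway. The following Python sketch uses the standard `ipaddress` module for that check. The `check_mgmt_addressing` helper and the sample addresses are hypothetical and stand in for the values recorded in Table 2.

```python
import ipaddress


def check_mgmt_addressing(mask, gateway, addresses):
    """Return the names of addresses that are not on the gateway's subnet."""
    # strict=False lets a host address (the gateway) define the network.
    network = ipaddress.ip_network(f"{gateway}/{mask}", strict=False)
    return [
        name for name, addr in addresses.items()
        if ipaddress.ip_address(addr) not in network
    ]


if __name__ == "__main__":
    # Hypothetical example values for the out-of-band management network.
    planned = {
        "nexus_A_mgmt0": "192.168.76.2",
        "nexus_B_mgmt0": "192.168.76.3",
    }
    off_subnet = check_mgmt_addressing("255.255.255.0", "192.168.76.1", planned)
    print("all addresses on the management subnet" if not off_subnet else off_subnet)
```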

Enable Appropriate Cisco Nexus 9000 Series Switches Features and Settings

Cisco Nexus 9000 A and Cisco Nexus 9000 B

To enable the IP switching feature and set the default spanning tree behaviors, follow these steps:

1. On each Nexus 9000, enter configuration mode:

config terminal

2. Use the following commands to enable the necessary features:

feature udld

feature lacp

feature vpc

feature interface-vlan

feature lldp

3. Configure spanning tree defaults:

spanning-tree port type network default

spanning-tree port type edge bpduguard default

spanning-tree port type edge bpdufilter default

4. Save the running configuration to start-up:

copy run start

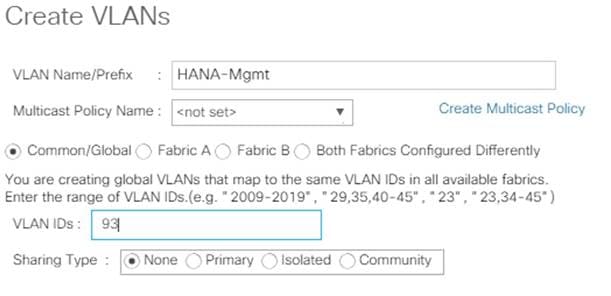

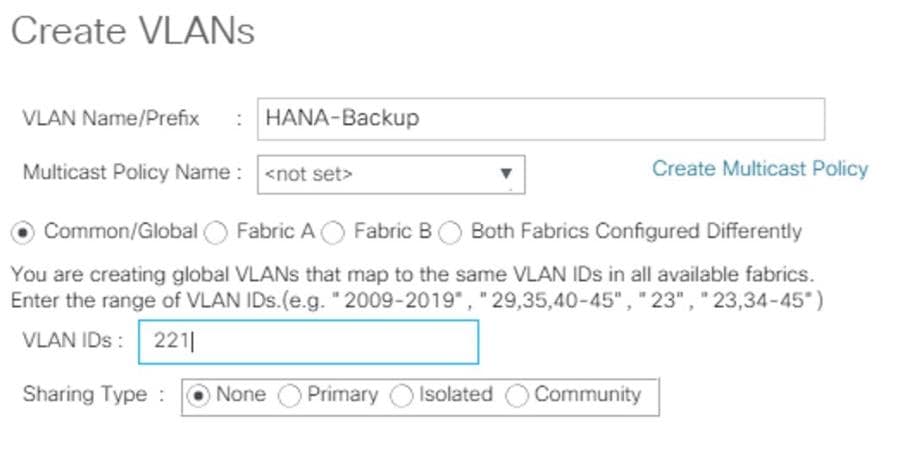

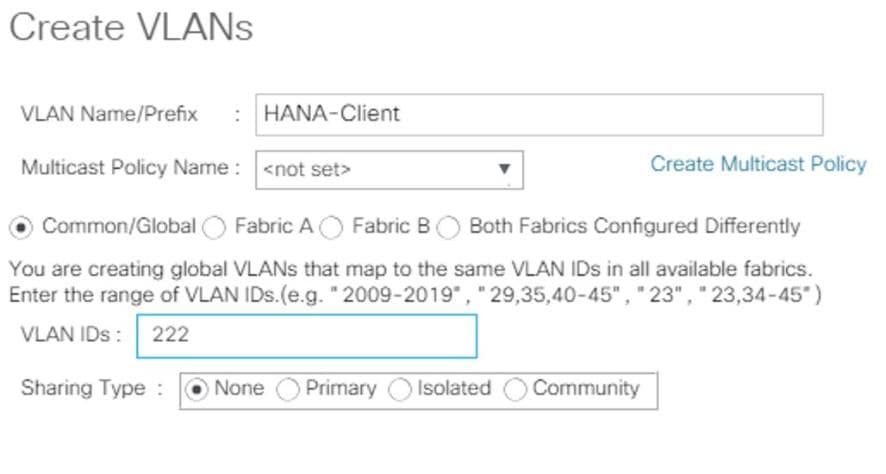

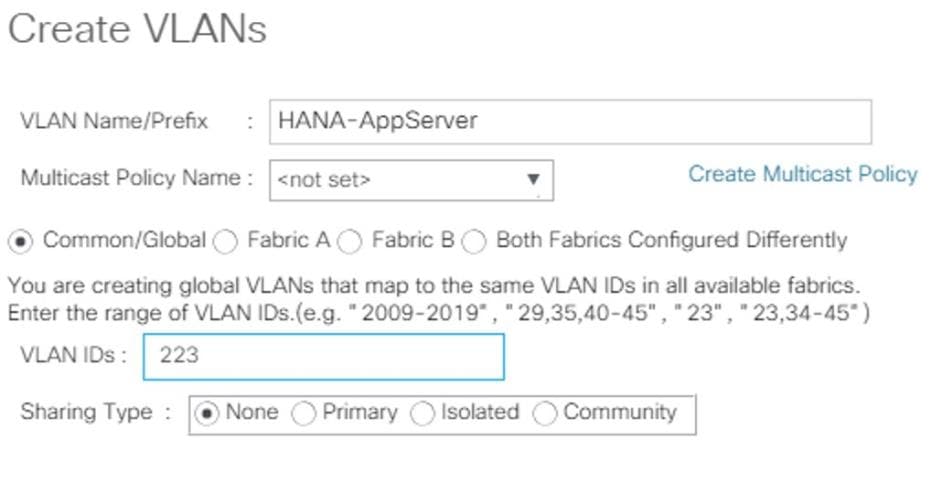

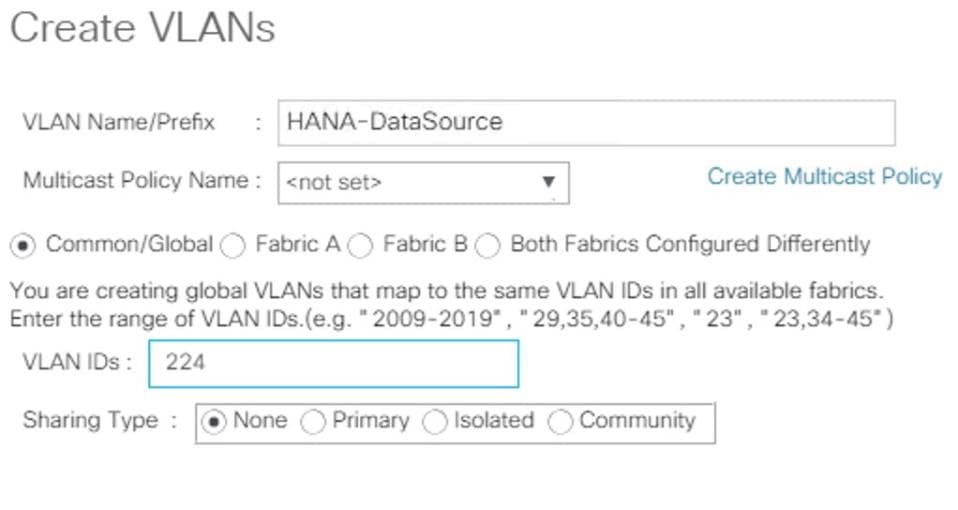

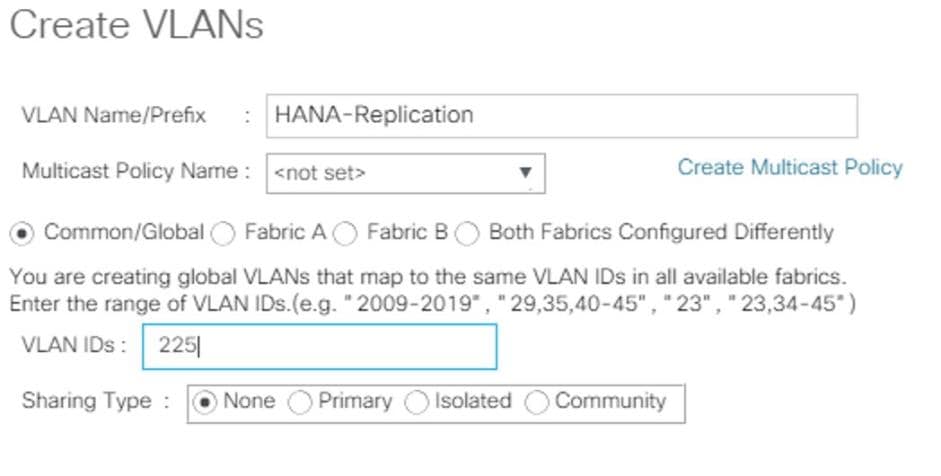

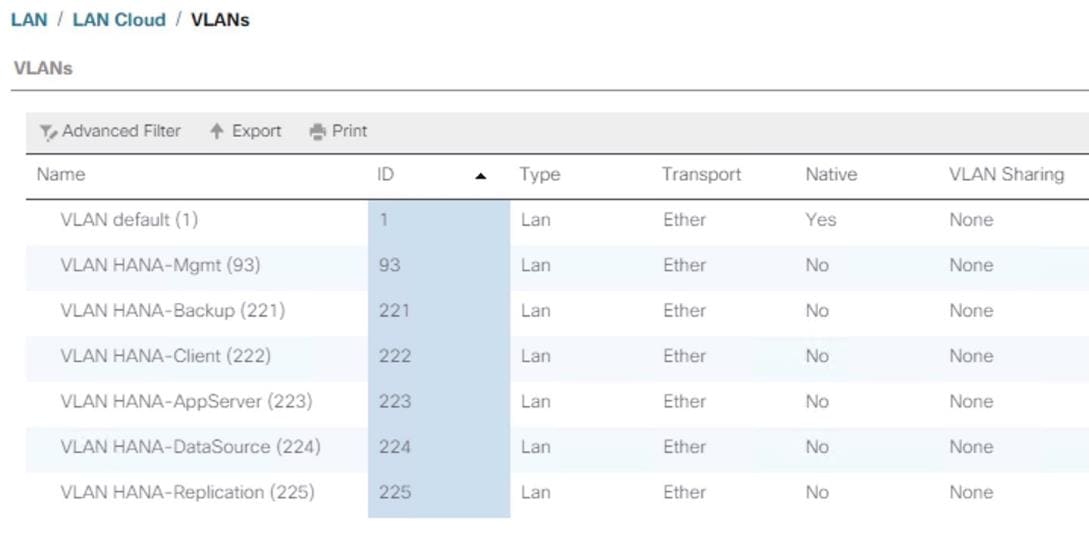

Create VLANs for SAP HANA Traffic

Cisco Nexus 9000 A and Cisco Nexus 9000 B

To create the necessary VLANs, complete the following step on both switches:

1. From the configuration mode, run the following commands:

vlan <<var_mgmt_vlan_id>>

name HANA-Node-Mgmt

vlan <<var_backup_vlan_id>>

name HANA-Node-Backup

vlan <<var_client_vlan_id>>

name HANA-Client

vlan <<var_appserver_vlan_id>>

name HANA-AppServer

vlan <<var_datasource_vlan_id>>

name HANA-DataSource

vlan <<var_replication_vlan_id>>

name HANA-System-Replication
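The VLAN stanzas above follow a fixed two-line pattern, so they can be generated from the site's VLAN plan instead of being typed per switch. The following is a minimal Python sketch under that assumption. The VLAN names come from the step above; the VLAN IDs and the `vlan_config` helper are hypothetical. The range check uses the 802.1Q limits of 1 to 4094, and the platform may reserve some IDs within that range.

```python
# VLAN names are taken from the step above; the IDs are hypothetical
# stand-ins for the <<var_*_vlan_id>> values recorded in Table 2.
HANA_VLANS = {
    "HANA-Node-Mgmt": 76,
    "HANA-Node-Backup": 221,
    "HANA-Client": 222,
    "HANA-AppServer": 223,
    "HANA-DataSource": 224,
    "HANA-System-Replication": 225,
}


def vlan_config(vlans):
    """Render 'vlan <id>' / 'name <name>' stanzas, rejecting invalid plans."""
    ids = list(vlans.values())
    if len(ids) != len(set(ids)):
        raise ValueError("each network needs its own VLAN ID")
    lines = []
    for name, vlan_id in vlans.items():
        if not 1 <= vlan_id <= 4094:
            raise ValueError(f"VLAN ID out of range for {name}: {vlan_id}")
        lines.append(f"vlan {vlan_id}")
        lines.append(f"  name {name}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(vlan_config(HANA_VLANS))
```

Generating the same text for both switches keeps the VLAN definitions identical on the two vPC peers.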

Configure Virtual Port-Channel Domain

Cisco Nexus 9000 A

To configure vPCs for switch A, follow these steps:

1. From the global configuration mode, create a new vPC domain:

vpc domain <<var_nexus_vpc_domain_id>>

2. Make Cisco Nexus 9000 A the primary vPC peer by defining a low priority value:

role priority 10

3. Use the management interfaces on the supervisors of the Nexus 9000s to establish a keepalive link:

peer-keepalive destination <<var_nexus_B_mgmt0_ip>> source <<var_nexus_A_mgmt0_ip>>

4. Enable the following features for this vPC domain:

peer-switch

delay restore 150

peer-gateway

auto-recovery

Cisco Nexus 9000 B

To configure vPCs for switch B, follow these steps:

1. From the global configuration mode, define the same vPC domain in switch B:

vpc domain <<var_nexus_vpc_domain_id>>

2. Make Cisco Nexus 9000 B the secondary vPC peer by defining a higher priority value than that of the Nexus 9000 A:

role priority 20

3. Use the management interfaces on the supervisors of the Cisco Nexus 9000s to establish a keepalive link:

peer-keepalive destination <<var_nexus_A_mgmt0_ip>> source <<var_nexus_B_mgmt0_ip>>

4. Enable the following features for this vPC domain:

peer-switch

delay restore 150

peer-gateway

auto-recovery
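The vPC domain configuration on the two switches differs in only two places: the role priority, and the keepalive source and destination, which are swapped. The Python sketch below makes that mirroring explicit by rendering both peers from one function. The `vpc_domain_config` helper, the domain ID, and the addresses are hypothetical; the commands and priority values are the ones used in the steps above.

```python
def vpc_domain_config(domain_id, local_ip, peer_ip, primary):
    """Render the vPC domain stanza for one peer of the pair."""
    # The lower role priority wins the primary role: 10 on A, 20 on B.
    priority = 10 if primary else 20
    return "\n".join([
        f"vpc domain {domain_id}",
        f"  role priority {priority}",
        f"  peer-keepalive destination {peer_ip} source {local_ip}",
        "  peer-switch",
        "  delay restore 150",
        "  peer-gateway",
        "  auto-recovery",
    ])


if __name__ == "__main__":
    # Hypothetical mgmt0 addresses for switch A and switch B.
    ip_a, ip_b = "192.168.76.2", "192.168.76.3"
    print(vpc_domain_config(10, ip_a, ip_b, primary=True))
    print()
    print(vpc_domain_config(10, ip_b, ip_a, primary=False))
```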

Configure Network Interfaces for the VPC Peer Links

Cisco Nexus 9000 A

1. Define a port description for the interfaces connecting to VPC Peer <<var_nexus_B_hostname>>.

interface Eth1/9

description VPC Peer <<var_nexus_B_hostname>>:1/9

interface Eth1/10

description VPC Peer <<var_nexus_B_hostname>>:1/10

2. Apply a port channel to both VPC Peer links and bring up the interfaces.

interface Eth1/9-10

channel-group 10 mode active

no shutdown

3. Define a description for the port-channel connecting to <<var_nexus_B_hostname>>.

interface Po10

description vPC peer-link

4. Make the port-channel a switchport and configure a trunk to allow the HANA VLANs.

switchport

switchport mode trunk

switchport trunk allowed vlan <<var_mgmt_vlan_id>>,<<var_backup_vlan_id>>,<<var_client_vlan_id>>,<<var_appserver_vlan_id>>,<<var_datasource_vlan_id>>,<<var_replication_vlan_id>>

5. Make this port-channel the VPC peer link and bring it up.

spanning-tree port type network

vpc peer-link

no shutdown

Cisco Nexus 9000 B

1. Define a port description for the interfaces connecting to VPC peer <<var_nexus_A_hostname>>.

interface Eth1/9

description VPC Peer <<var_nexus_A_hostname>>:1/9

interface Eth1/10

description VPC Peer <<var_nexus_A_hostname>>:1/10

2. Apply a port channel to both VPC peer links and bring up the interfaces.

interface Eth1/9-10

channel-group 10 mode active

no shutdown

3. Define a description for the port-channel connecting to <<var_nexus_A_hostname>>.

interface Po10

description vPC peer-link

4. Make the port-channel a switchport and configure a trunk to allow HANA VLANs.

switchport

switchport mode trunk

switchport trunk allowed vlan <<var_mgmt_vlan_id>>,<<var_backup_vlan_id>>,<<var_client_vlan_id>>,<<var_appserver_vlan_id>>,<<var_datasource_vlan_id>>,<<var_replication_vlan_id>>

5. Make this port-channel the VPC peer link and bring it up.

spanning-tree port type network

vpc peer-link

no shutdown
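The allowed VLAN list on the peer link has to be entered as one comma-separated argument on a single line, with no spaces or line breaks between the IDs. The sketch below builds that command from a list of VLAN IDs. The function name and the example IDs are assumptions made for illustration.

```python
# Hypothetical helper (not part of the CVD): builds the trunk allowed-VLAN
# command as one token list with no embedded spaces or line breaks.
def allowed_vlan_command(vlan_ids):
    """Return the 'switchport trunk allowed vlan' command for the given IDs."""
    if not vlan_ids:
        raise ValueError("at least one VLAN ID is required")
    # Remove duplicates and sort so both switches receive the same string.
    unique_sorted = sorted(set(vlan_ids))
    return "switchport trunk allowed vlan " + ",".join(str(v) for v in unique_sorted)


if __name__ == "__main__":
    # Placeholder IDs for the mgmt, backup, client, appserver, datasource,
    # and replication VLANs.
    print(allowed_vlan_command([75, 221, 222, 223, 224, 225]))
```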

Configure vPCs with Cisco UCS Fabric Interconnect

To configure the vPCs for use by the Client zone, Admin zone, and internal zone traffic, follow these steps:

Run on Cisco Nexus 9000 A and Cisco Nexus 9000 B

1. Define a port description for the interfaces connecting to <<var_ucs_clustername>>-A.

interface Eth1/1

description <<var_ucs_clustername>>-A:1/33

When running this on Switch B, note the change of remote port in the description command. In the current example, it would be “description <<var_ucs_clustername>>-A:1/34” based on the connectivity details. This can be verified with the command “show cdp neighbors”.

2. Apply it to a port channel and bring up the interface.

interface eth1/1

channel-group 21 mode active

no shutdown

3. Define a description for the port-channel connecting to <<var_ucs_clustername>>-A.

interface Po21

description <<var_ucs_clustername>>-A

4. Make the port-channel a switchport and configure a trunk to allow all HANA VLANs.

switchport

switchport mode trunk

switchport trunk allowed vlan <<var_mgmt_vlan_id>>,<<var_client_vlan_id>>,<<var_appserver_vlan_id>>,<<var_datasource_vlan_id>>,<<var_replication_vlan_id>>

5. Make the port channel and associated interfaces spanning tree edge ports.

spanning-tree port type edge trunk

6. Set the MTU to be 9216 to support jumbo frames.

mtu 9216

7. Make this a VPC port-channel and bring it up.

vpc 21

no shutdown

8. Define a port description for the interface connecting to <<var_ucs_clustername>>-B.

interface Eth1/2

description <<var_ucs_clustername>>-B:1/33

When running this on Switch B, note the change of remote port in the description command. In the current example, it would be “description <<var_ucs_clustername>>-B:1/34” based on the connectivity details. This can be verified with the command “show cdp neighbors”.

9. Apply it to a port channel and bring up the interface.

interface Eth1/2

channel-group 22 mode active

no shutdown

10. Define a description for the port-channel connecting to <<var_ucs_clustername>>-B.

interface port-channel22

description <<var_ucs_clustername>>-B

11. Make the port-channel a switchport and configure a trunk to allow all HANA VLANs.

switchport

switchport mode trunk

switchport trunk allowed vlan <<var_mgmt_vlan_id>>,<<var_client_vlan_id>>,<<var_appserver_vlan_id>>,<<var_datasource_vlan_id>>,<<var_replication_vlan_id>>

12. Make the port channel and associated interfaces spanning tree edge ports.

spanning-tree port type edge trunk

13. Set the MTU to be 9216 to support jumbo frames.

mtu 9216

14. Make this a VPC port-channel and bring it up.

vpc 22

no shutdown

(Optional) Configure SAP HANA Backup Networks to Use Separate vPCs

Configure additional vPCs to be used exclusively by the Backup Network. The following example configures two ports, Ethernet 1/31 and Ethernet 1/32, connected to Eth1/31 and Eth1/32 on the Cisco UCS Fabric Interconnects.

Run on Cisco Nexus 9000 A and Cisco Nexus 9000 B

1. Define a port description for the interface connecting to <<var_ucs_clustername>>-A.

interface Eth1/31

description <<var_ucs_clustername>>-A:1/31

When running this on Switch B, adjust the remote port in the description command to match the connectivity details. In the current example, it would be “description <<var_ucs_clustername>>-A:1/31”. This can be verified with the command “show cdp neighbors”.

2. Apply it to a port channel and bring up the interface.

interface eth1/31

channel-group 31 mode active

no shutdown

3. Define a description for the port-channel connecting to <<var_ucs_clustername>>-A.

interface Po31

description PC-from-FI-A

4. Make the port-channel a switchport and configure a trunk to allow the backup VLAN.

switchport

switchport mode trunk

switchport trunk allowed vlan <<var_backup_vlan_id>>

5. Make the port channel and associated interfaces spanning tree edge ports.

spanning-tree port type edge trunk

6. Set the MTU to be 9216 to support jumbo frames.

mtu 9216

7. Make this a VPC port-channel and bring it up.

vpc 31

no shutdown

8. Define a port description for the interface connecting to <<var_ucs_clustername>>-B.

interface Eth1/32

description <<var_ucs_clustername>>-B:1/31

When running this on Switch B, adjust the remote port in the description command to match the connectivity details. In the current example, it would be “description <<var_ucs_clustername>>-B:1/31”. This can be verified with the command “show cdp neighbors”.

9. Apply it to a port channel and bring up the interface.

interface Eth1/32

channel-group 32 mode active

no shutdown

10. Define a description for the port-channel connecting to <<var_ucs_clustername>>-B.

interface Po32

description PC-from-FI-B

11. Make the port-channel a switchport and configure a trunk to allow the backup VLAN.

switchport

switchport mode trunk

switchport trunk allowed vlan <<var_backup_vlan_id>>

12. Make the port channel and associated interfaces spanning tree edge ports.

spanning-tree port type edge trunk

13. Set the MTU to be 9216 to support jumbo frames.

mtu 9216

14. Make this a VPC port-channel and bring it up.

vpc 32

no shutdown

Make sure to save the configuration to the startup config using the command copy running-config startup-config.

Set Global NTP Configurations

Run the following commands on both switches to set global configurations:

ntp server <<var_oob_ntp>> use-vrf management

The ntp server should be an accessible NTP server for use by the switches. In this case, point to an out-of-band source.

ntp master 3

ntp source <<var_nexus_ib_vip>>

Setting the switches as ntp masters to redistribute as an ntp source is optional here, but can be a valuable fix if the tenant networks are not enabled to reach the primary ntp server.

*** Save all configurations to this point on both Nexus Switches ***

copy running-config startup-config

A Hitachi Virtual Storage Platform F/G series specialist must install Hitachi Virtual Storage Platform G370. The initial configuration for VSP G370 is done in the Hitachi Distribution Centers.

If the IP addresses of the SVP are not known at build time in the distribution center, they are set to default values and must be changed onsite by the Hitachi storage specialist.

Storage Architecture Overview

Each SAP HANA node needs the following storage layout:

· Operating system (OS) volume

· SAP HANA shared volume

· SAP HANA log volume

· SAP HANA data volume

This SAP HANA TDI setup utilizes the following two dynamic provisioning pools created with Hitachi Dynamic Provisioning for the storage layout. This ensures maximum utilization and optimization at a lower cost than other solutions.

· OS_SH_DT_Pool for the following:

- OS volume

- SAP HANA shared volume

- SAP HANA data volume

· LOG_Pool for the following:

- SAP HANA log volume

The validated dynamic provisioning pool layout options with minimal disks and storage cache on Hitachi Virtual Storage Platform F350, VSP G350, VSP F370, VSP G370, VSP F700, VSP G700, VSP F900, and VSP G900 storage are listed in Table 9.

Table 9 Dynamic Provisioning Pools with Disks and Storage Cache

| Storage | Cache | Number of Nodes | Number of Parity Groups in OS_SH_DT_Pool, RAID-10 (2D+2D) | Number of Parity Groups in LOG_Pool, RAID-10 (2D+2D) |
| --- | --- | --- | --- | --- |
| VSP F350, VSP G350, VSP F370, VSP G370 (with SSD) | VSP F350, VSP G350: 128 GB; VSP F370, VSP G370: 256 GB | up to 8 | 1 | 1 |
| | | up to 15 | 2 | 2 |
| | | up to 16 | 3 | 3 |
| VSP F700, VSP G700 (with SSD) | 512 GB | up to 11 | 1 | 1 |
| | | up to 20 | 2 | 2 |
| | | up to 28 | 3 | 3 |
| | | up to 30 | 4 | 4 |
| | | up to 32 | 4 | 5 |
| VSP F900, VSP G900 (with SSD) | 1024 GB | up to 17 | 1 | 1 |
| | | up to 23 | 2 | 2 |
| | | up to 31 | 3 | 3 |
| | | up to 32 | 4 | 3 |
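Table 9 can be read as a lookup from storage model and node count to the minimum number of parity groups per pool. The sketch below encodes the table rows as data and returns the first row whose node limit covers the requested count. The function name and the model keys are illustrative assumptions; the numbers are copied from Table 9.

```python
# Hypothetical lookup (not part of the CVD). Each tuple is
# (maximum nodes, parity groups in OS_SH_DT_Pool, parity groups in LOG_Pool),
# copied from Table 9.
TABLE_9 = {
    "VSP F350/G350/F370/G370": [(8, 1, 1), (15, 2, 2), (16, 3, 3)],
    "VSP F700/G700": [(11, 1, 1), (20, 2, 2), (28, 3, 3), (30, 4, 4), (32, 4, 5)],
    "VSP F900/G900": [(17, 1, 1), (23, 2, 2), (31, 3, 3), (32, 4, 3)],
}


def parity_groups(model, nodes):
    """Return (OS_SH_DT_Pool groups, LOG_Pool groups) for a node count."""
    if nodes < 1:
        raise ValueError("node count must be at least 1")
    for max_nodes, os_sh_dt_groups, log_groups in TABLE_9[model]:
        if nodes <= max_nodes:
            return os_sh_dt_groups, log_groups
    raise ValueError(f"{nodes} nodes exceeds the validated maximum for {model}")


if __name__ == "__main__":
    print(parity_groups("VSP F350/G350/F370/G370", 16))
```

These are minimum values only; capacity requirements can still call for additional parity groups.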

Additional parity groups of the same type may need to be added. Drive boxes may be needed if the internal drives on storage are not sufficient, depending on the following:

· The various combinations of node sizes

· The number of nodes to meet the capacity requirements

While it is not limited to these systems, this SAP HANA tailored data center integration solution uses the following four active SAP HANA systems, as examples:

· System 1 — 384 GB

· System 2 — 768 GB

· System 3 — 1536 GB

· System 4 — 3072 GB

Provision the storage for the four SAP HANA systems listed above:

· Determine the minimum sizes for the operating system, data, log, and HANA shared volumes using the following formulas from the SAP white paper SAP HANA Storage Requirements:

- Every HANA node requires approximately 100 GB capacity for the operating system.

- /hana/shared size uses formulas:

§ Single node (scale-up) — Size = MIN (1 × RAM; 1 TB)

§ Multi-node (scale-out) — Size = 1 × RAM of a worker node for every 4 worker nodes

- Data size requires at least 1 × RAM of each HANA node

- Log size uses formulas:

§ Systems with less than or equal to 512 GB memory — Size = 1/2 × RAM

§ Systems with greater than 512 GB memory — Size = 512 GB

· Provision the storage:

- Create two dynamic provisioning pools for the four SAP HANA systems on storage:

§ Use OS_SH_DT_Pool to provision the operating system volume, SAP HANA shared volume, and Data volume.

§ Use LOG_Pool to provision the Log volume.

- For SSDs, create the parity groups first, as shown in the example in Table 10 for Hitachi Virtual Storage Platform G370, using the RAID-10 storage design.

Table 10 Dynamic Provisioning Pool with RAID10(2D+2D) for 16 Nodes on VSP F370 and G370 with SSDs

| Dynamic Provisioning Pool | Parity Group ID | Parity Group RAID Level and Disks | LDEV ID | LDEV Name | LDEV Size | MPU Assignment |
| --- | --- | --- | --- | --- | --- | --- |
| OS_SH_DT_Pool | 1 | RAID-10 (2D+2D) on 1.9 TB SSD | 00:00:01 | OS_SH_DT_DPVOL_1 | 878 GB | MPU-10 |
| | | | 00:00:02 | OS_SH_DT_DPVOL_2 | 878 GB | MPU-20 |
| | | | 00:00:03 | OS_SH_DT_DPVOL_3 | 878 GB | MPU-10 |
| | | | 00:00:04 | OS_SH_DT_DPVOL_4 | 878 GB | MPU-20 |
| | 2 | RAID-10 (2D+2D) on 1.9 TB SSD | 00:00:05 | OS_SH_DT_DPVOL_5 | 878 GB | MPU-10 |
| | | | 00:00:06 | OS_SH_DT_DPVOL_6 | 878 GB | MPU-20 |
| | | | 00:00:07 | OS_SH_DT_DPVOL_7 | 878 GB | MPU-10 |
| | | | 00:00:08 | OS_SH_DT_DPVOL_8 | 878 GB | MPU-20 |
| | 3 | RAID-10 (2D+2D) on 1.9 TB SSD | 00:00:09 | OS_SH_DT_DPVOL_9 | 878 GB | MPU-10 |
| | | | 00:00:10 | OS_SH_DT_DPVOL_10 | 878 GB | MPU-20 |
| | | | 00:00:11 | OS_SH_DT_DPVOL_11 | 878 GB | MPU-10 |
| | | | 00:00:12 | OS_SH_DT_DPVOL_12 | 878 GB | MPU-20 |
| LOG_Pool | 4 | RAID-10 (2D+2D) on 1.9 TB SSD | 00:00:13 | LG_DPVOL_1 | 878 GB | MPU-10 |
| | | | 00:00:14 | LG_DPVOL_2 | 878 GB | MPU-20 |
| | | | 00:00:15 | LG_DPVOL_3 | 878 GB | MPU-10 |
| | | | 00:00:16 | LG_DPVOL_4 | 878 GB | MPU-20 |
| | 5 | RAID-10 (2D+2D) on 1.9 TB SSD | 00:00:17 | LG_DPVOL_5 | 878 GB | MPU-10 |
| | | | 00:00:18 | LG_DPVOL_6 | 878 GB | MPU-20 |
| | | | 00:00:19 | LG_DPVOL_7 | 878 GB | MPU-10 |
| | | | 00:00:20 | LG_DPVOL_8 | 878 GB | MPU-20 |
| | 6 | RAID-10 (2D+2D) on 1.9 TB SSD | 00:00:21 | LG_DPVOL_9 | 878 GB | MPU-10 |
| | | | 00:00:22 | LG_DPVOL_10 | 878 GB | MPU-20 |
| | | | 00:00:23 | LG_DPVOL_11 | 878 GB | MPU-10 |
| | | | 00:00:24 | LG_DPVOL_12 | 878 GB | MPU-20 |

· Assign all LDEVs to the dedicated pool for VSP G370.

· Create virtual volumes (VVOLs) for the operating system, SAP HANA shared, log, and data volumes. Table 11 shows examples for HANA systems with memory of 384 GB, 768 GB, 1536 GB, and 3072 GB.

Table 11 VVOLs for SAP HANA Nodes for Four Memory Sizes of HANA Systems

| Dynamic Provisioning Pool | VVOL ID | VVOL Name | VVOL Size | MPU Assignment | System Memory |
| --- | --- | --- | --- | --- | --- |
| OS_SH_DT_Pool | 00:01:00 | HANA_OS_N1 | 100 GB | MPU-10 | 384 GB |
| | 00:02:00 | HANA_OS_N2 | 100 GB | MPU-20 | 768 GB |
| | 00:03:00 | HANA_OS_N3 | 100 GB | MPU-10 | 1536 GB |
| | 00:04:00 | HANA_OS_N4 | 100 GB | MPU-20 | 3072 GB |
| | 00:01:01 | HANA_SH_N1 | 384 GB | MPU-10 | 384 GB |
| | 00:02:01 | HANA_SH_N2 | 768 GB | MPU-20 | 768 GB |
| | 00:03:01 | HANA_SH_N3 | 1536 GB | MPU-10 | 1536 GB |
| | 00:04:01 | HANA_SH_N4 | 3072 GB | MPU-20 | 3072 GB |
| | 00:01:06 | HANA_DATA_N1_1 | 96 GB | MPU-10 | 384 GB |
| | 00:01:07 | HANA_DATA_N1_2 | 96 GB | MPU-20 | |
| | 00:01:08 | HANA_DATA_N1_3 | 96 GB | MPU-10 | |
| | 00:01:09 | HANA_DATA_N1_4 | 96 GB | MPU-20 | |
| | 00:02:06 | HANA_DATA_N2_1 | 192 GB | MPU-10 | 768 GB |
| | 00:02:07 | HANA_DATA_N2_2 | 192 GB | MPU-20 | |
| | 00:02:08 | HANA_DATA_N2_3 | 192 GB | MPU-10 | |
| | 00:02:09 | HANA_DATA_N2_4 | 192 GB | MPU-20 | |
| | 00:03:06 | HANA_DATA_N3_1 | 384 GB | MPU-10 | 1536 GB |
| | 00:03:07 | HANA_DATA_N3_2 | 384 GB | MPU-20 | |
| | 00:03:08 | HANA_DATA_N3_3 | 384 GB | MPU-10 | |
| | 00:03:09 | HANA_DATA_N3_4 | 384 GB | MPU-20 | |
| | 00:04:06 | HANA_DATA_N4_1 | 768 GB | MPU-10 | 3072 GB |
| | 00:04:07 | HANA_DATA_N4_2 | 768 GB | MPU-20 | |
| | 00:04:08 | HANA_DATA_N4_3 | 768 GB | MPU-10 | |
| | 00:04:09 | HANA_DATA_N4_4 | 768 GB | MPU-20 | |
| LOG_Pool | 00:01:02 | HANA_LOG_N1_1 | 48 GB | MPU-10 | 384 GB |
| | 00:01:03 | HANA_LOG_N1_2 | 48 GB | MPU-20 | |
| | 00:01:04 | HANA_LOG_N1_3 | 48 GB | MPU-10 | |
| | 00:01:05 | HANA_LOG_N1_4 | 48 GB | MPU-20 | |
| | 00:02:02 | HANA_LOG_N2_1 | 96 GB | MPU-10 | 768 GB |
| | 00:02:03 | HANA_LOG_N2_2 | 96 GB | MPU-20 | |
| | 00:02:04 | HANA_LOG_N2_3 | 96 GB | MPU-10 | |
| | 00:02:05 | HANA_LOG_N2_4 | 96 GB | MPU-20 | |
| | 00:03:02 | HANA_LOG_N3_1 | 128 GB | MPU-10 | 1536 GB |
| | 00:03:03 | HANA_LOG_N3_2 | 128 GB | MPU-20 | |
| | 00:03:04 | HANA_LOG_N3_3 | 128 GB | MPU-10 | |
| | 00:03:05 | HANA_LOG_N3_4 | 128 GB | MPU-20 | |
| | 00:04:02 | HANA_LOG_N4_1 | 128 GB | MPU-10 | 3072 GB |
| | 00:04:03 | HANA_LOG_N4_2 | 128 GB | MPU-20 | |
| | 00:04:04 | HANA_LOG_N4_3 | 128 GB | MPU-10 | |
| | 00:04:05 | HANA_LOG_N4_4 | 128 GB | MPU-20 | |

While mapping the LUN path assignment for each node, add VVOLs in the following order:

1. The operating system volume

2. The SAP HANA shared volume

3. The log volume

4. The data volume

Table 12 lists an example configuration of the LUN path assignment for Node 1. Configure the LUN assignment similarly for all other nodes.

Table 12 Example LUN Path Assignment for the SAP HANA Configuration on Node 1

| LUN ID | LDEV ID | LDEV Name |
| --- | --- | --- |
| 0000 | 00:01:00 | HANA_OS_N1 |
| 0001 | 00:01:01 | HANA_SH_N1 |
| 0002 | 00:01:02 | HANA_LOG_N1_1 |
| 0003 | 00:01:03 | HANA_LOG_N1_2 |
| 0004 | 00:01:04 | HANA_LOG_N1_3 |
| 0005 | 00:01:05 | HANA_LOG_N1_4 |
| 0006 | 00:01:06 | HANA_DATA_N1_1 |
| 0007 | 00:01:07 | HANA_DATA_N1_2 |
| 0008 | 00:01:08 | HANA_DATA_N1_3 |
| 0009 | 00:01:09 | HANA_DATA_N1_4 |
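Because the same ordering applies to every node, the assignment in Table 12 can be generated rather than typed by hand. The sketch below reproduces the Node 1 rows and extends the pattern to other nodes. The function name is illustrative, and the assumption that the node number maps directly to the middle field of the LDEV ID is taken from the four nodes shown in Table 11; it is restricted to nodes 1 through 9 because the numbering beyond that is not shown in this guide.

```python
# Hypothetical generator (not part of the CVD) for the LUN path assignment.
def lun_path_assignment(node):
    """Return (LUN ID, LDEV ID, LDEV name) tuples in the required order."""
    if not 1 <= node <= 9:
        raise ValueError("this sketch only covers nodes 1 through 9")
    # Required order: OS volume, shared volume, log VVOLs, then data VVOLs.
    names = (
        [f"HANA_OS_N{node}", f"HANA_SH_N{node}"]
        + [f"HANA_LOG_N{node}_{i}" for i in range(1, 5)]
        + [f"HANA_DATA_N{node}_{i}" for i in range(1, 5)]
    )
    return [
        (f"{lun:04d}", f"00:{node:02d}:{lun:02d}", name)
        for lun, name in enumerate(names)
    ]


if __name__ == "__main__":
    for row in lun_path_assignment(1):
        print(" | ".join(row))
```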

Log into Storage Navigator

After installing the VSP G370 onsite and running all necessary cable connections and powering up the VSP G370, open Hitachi Storage Navigator to start the configuration:

1. Access Hitachi Storage Navigator through a web browser.

2. Use a URL of the form https://<IP of Storage System SVP>/dev/storage/886000<Serial Number of Storage System>/emergency.do. For example, if the Storage System SVP IP address is 192.168.50.21 and the Serial Number of the Storage System is 456789, the URL would be:

https://192.168.50.21/dev/storage/886000456789/emergency.do

3. Log into Hitachi Storage Navigator.
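The login URL from step 2 is assembled from the SVP IP address, a fixed numeric prefix, and the storage system serial number. The sketch below builds it with basic input checks. The function name is illustrative, and the default prefix is taken from the URL template in step 2; it is exposed as a parameter in case the installed system uses a different value.

```python
import ipaddress


# Hypothetical helper (not part of the CVD or of any Hitachi tooling).
def storage_navigator_url(svp_ip, serial, model_prefix="886000"):
    """Return the Storage Navigator login URL for the given SVP and serial."""
    # Raises ValueError if svp_ip is not a valid IPv4 or IPv6 address.
    ipaddress.ip_address(svp_ip)
    if not str(serial).isdigit():
        raise ValueError("serial number must contain digits only")
    return f"https://{svp_ip}/dev/storage/{model_prefix}{serial}/emergency.do"


if __name__ == "__main__":
    print(storage_navigator_url("192.168.50.21", "456789"))
```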

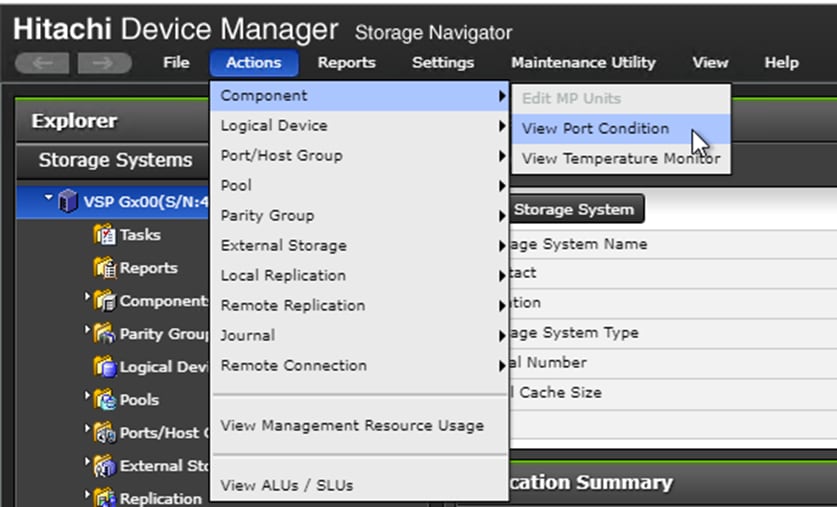

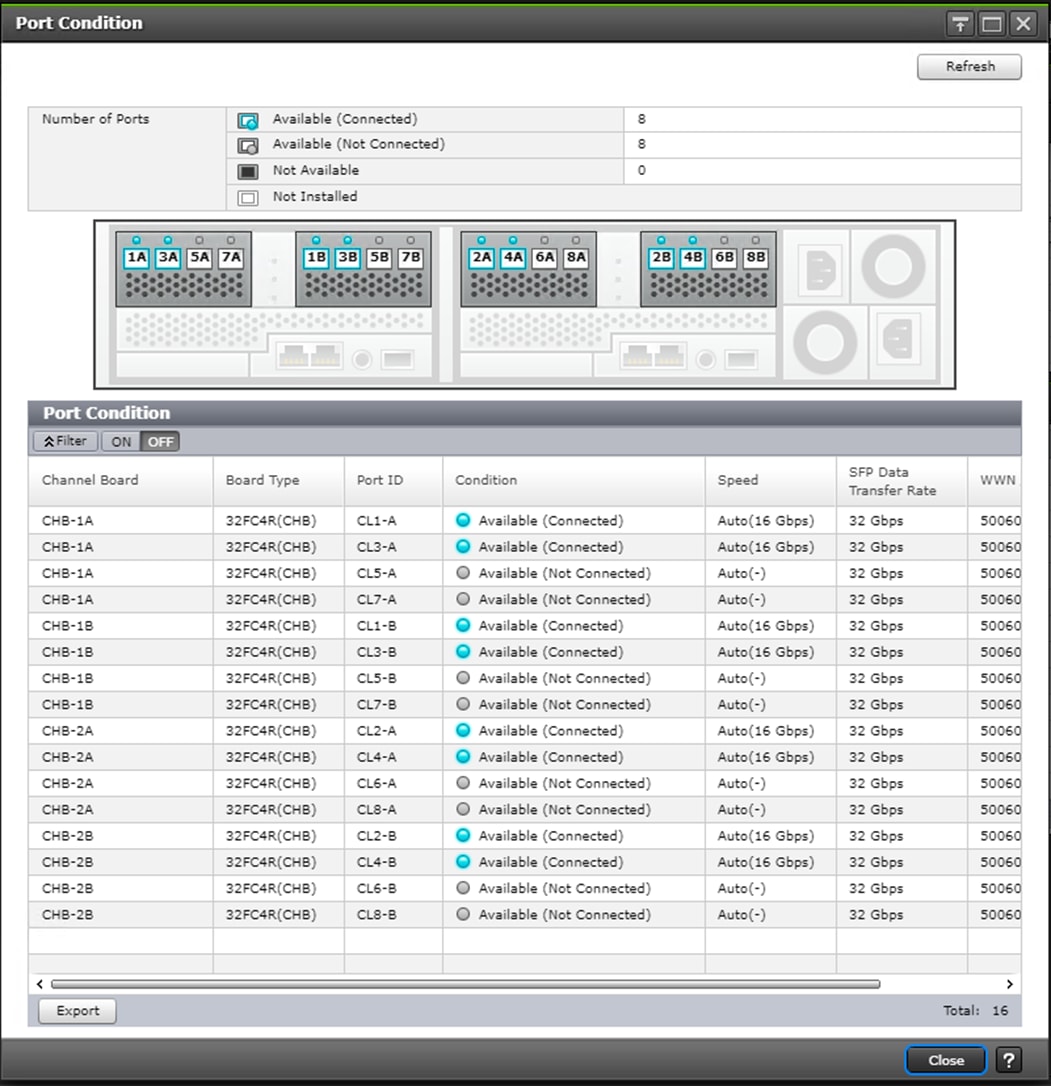

Check SFP Data Transfer Rate

When you first log in prior to starting the configuration of the storage, navigate to Port Condition to check the SFP Data Transfer Rate.

To check the SFP data transfer rate, follow these steps:

1. In the Storage Navigator window click Actions, Components and then View Port Condition.

The Port Condition window opens.

2. Make sure the transfer rate in the SFP Data Transfer Rate matches the speed of the SFPs in the storage controller. The actual Speed can differ, depending on the configuration of the other components.

3. Click Close to close the Port Condition window and start with the storage configuration.

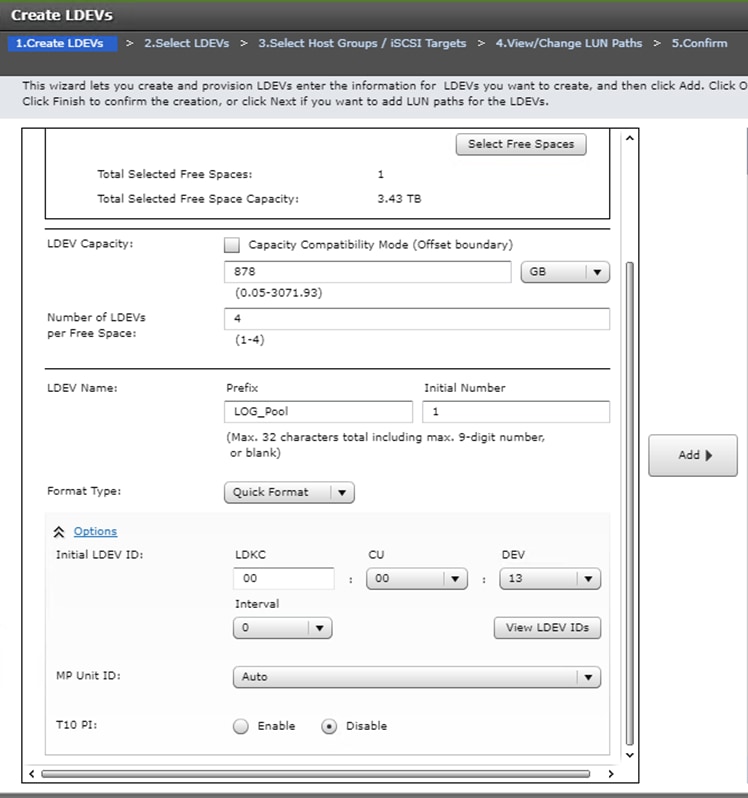

Create Pool Volumes

This procedure creates the Parity Groups and LDEVs using Hitachi Storage Navigator for the following:

· Operating System LUNs

· SAP HANA Shared LUNs

· SAP HANA Log LUNs

· SAP HANA Data LUNs

Use the storage navigator session from the previous section. Repeat these steps to create all the required pool volumes.

To create a pool volume, follow these steps:

1. Open the LDEV creation window.

2. In the General Tasks pane, click Create LDEVs. The 1 Create LDEVs dialog box opens.

3. Create Pool Volume LUN:

a. Create an LDEV.

b. Enter the values shown in Table 13 into the Create LDEVs dialog box.

Table 13 Pool Volume Creation for LOG_Pool and OS_SH_DT_Pool

| For This | Enter This |
| --- | --- |
| Provisioning Type | Click Basic |
| Drive Type/RPM | Click SSD |
| RAID Level | Click 1 (2D+2P) |
| Select Free Spaces | Click the option |
| Parity Group | Select the 1 (2D+2P) Parity Group |
| LDEV Capacity | Type value 878 GB |
| Number of LDEVs per Free Space | Type 4 for each RAID group |
| LDEV Name area | Type the pool name as the prefix and the next free number as the initial number, for example, 1 for the first RAID group, 5 for the second, and so on. |
| Options area | In the LDKC:CU:DEV text box, type the initial value as shown in the LDEV ID column of Table 10. |
| | In the MPU assignment text box, select Auto. |

4. Click Add and then click Finish.

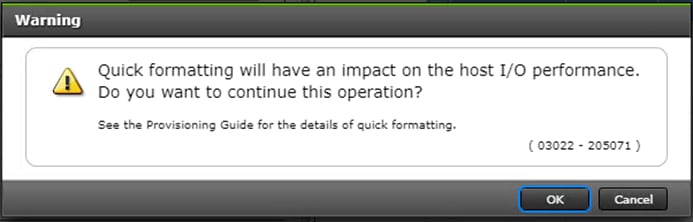

5. Acknowledge the Warning by clicking OK.

The Confirm window opens.

6. Confirm the selection again, and then click Apply.

7. Record the task name for later reference.

8. Repeat steps 1-7 to create every pool volume required by this installation.

9. Keep the Storage Navigator session open to Create Dynamic Provisioning Pools.

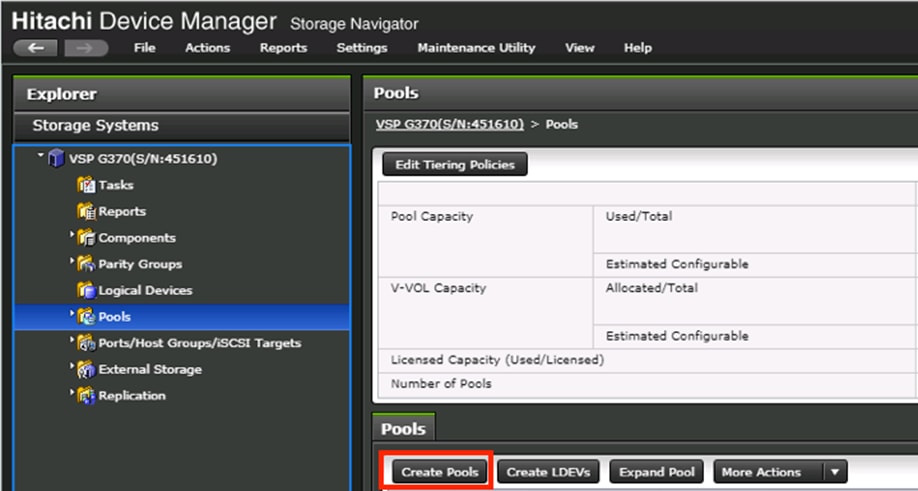

Create Dynamic Provisioning Pools

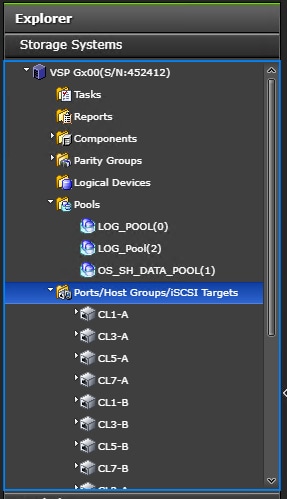

Use the Storage Navigator session from previous procedure to perform this procedure to create dynamic provisioning pools. This solution uses two dynamic provisioning pools:

· LOG_Pool

· OS_SH_DT_Pool

Follow the steps in this section to create the LOG_Pool and repeat these steps to create the OS_SH_DT_Pool.

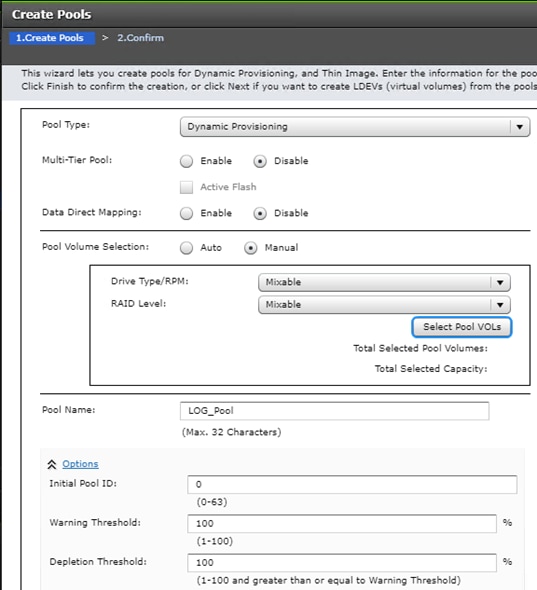

To create a dynamic provisioning pool, follow these steps:

1. From Pools, click Create Pools to open the 1. Create Pools window.

2. Enter the values shown in Table 14 in the Create Pools dialog box.

Table 14 Dynamic Provisioning Pool Creation: LOG_Pool and OS_SH_DT_Pool

| For This | Enter This |
| --- | --- |
| Pool Type | Select Dynamic Provisioning |
| Multi-Tier Pool | Disabled |
| Data Direct Mapping | Disabled |
| Pool Volume Selection | Click Manual |
| Pool Name | LOG_Pool or OS_SH_DT_Pool |
| Initial Pool ID | Type 0 for LOG_Pool or type 1 for OS_SH_DT_Pool |
| Warning Threshold | 100 |
| Deletion Threshold | 100 |

3. Select the pool volumes for the pool.

4. Click Select Pool VOLs.

5. Select the volumes.

- For LOG_Pool, identify the pool volumes for the pool and select them. Click Add.

- For OS_SH_DT_Pool, identify the pool volumes for the pool and select them. Click Add.

6. Click OK.

7. Click Add.

8. Click Finish on the 2. Confirm window.

9. Click Apply.

Provision the LUNS (Virtual Volumes)

Follow the storage configuration outlined below for this solution. Do not make any changes to these instructions in the Distribution Center. SAP does not support any changes made to this exact configuration.

This procedure creates the LDEVs using Hitachi Storage Navigator for the following:

· Operating system LUNs

· SAP HANA shared LUNs

· Log LUNs

· Data LUNs

Assign each LUN to a specific MPU for optimal performance, and map it to the LUN paths using the specific LUN IDs in sequence, as listed in Table 12.

Create Virtual Volumes for the Operating System LUNS and Map Ports

Use Hitachi Storage Navigator to create the operating system LDEV and map it to specified Hitachi Virtual Storage Platform Fx00 or Gx00 ports.

To create LDEVs for the operating system boot LUN, follow these steps:

1. From Pools, click OS_SH_DT_Pool.

2. In the Virtual Volumes pane, click Create LDEVs. The 1 Create LDEVs dialog box opens.

3. Create operating system boot LUNs.

4. Create one operating system LUN per HANA node and assign it to the ports according to Table 19. Repeat this step until all operating system LUNs are created.

5. Create an LDEV.

6. Enter the values shown in Table 15 in the Create LDEVs dialog box.

Table 15 LDEV Creation Values for Operating System LUN

| For This | Enter This |
| --- | --- |
| Provisioning Type | Click Dynamic Provisioning |
| Drive Type/RPM | Click SSD/- |
| RAID Level | Click 1 (2D+2P) |
| Select Pool | OS_SH_DT_Pool |
| LDEV Capacity | Type 100 GB |
| Number of LDEVs per Free Space | Type the node number to be added to the name. |
| LDEV Name area | Type the Prefix for the LUN name: HANA_OS_N |
| | Type the node number to be added to the name. |
| Full Allocation | Enabled |
| Options area | Type or click the values for LDKC, CU and DEV according to the VVOL ID column of Table 11. |
| | Select the value Auto for the MPU Unit ID. |

7. Click Add and then click Next.

The 2 Select LDEV window displays all configured LDEVs in the right pane.

8. Select the host ports.

9. Click Next on the 2 Select LDEVs window. The 3 Select Host Groups/iSCSI Targets window opens.

10. From the Available Host Groups pane, select the OS LUN ports by referring to Table 19.

11. Click Add.

12. The selected ports that were in the Available Hosts Groups pane are now in the Selected Host Groups pane.

13. Click Next.

14. The 4 View/Change LUN Paths window displays.

15. Confirm the selected ports.

The operating system LUN always has a LUN ID of 000.

16. Confirm the selected ports and adjust the LUN ID as listed in Table 12.

17. Click Finish.

The 5 Confirm window opens.

18. Confirm the selection again and then click Apply.

19. Record the task name for later reference.

20. Keep the Storage Navigator session open for Create Virtual Volumes for HANA Shared File System and Map Ports.

Create Virtual Volumes for HANA Shared File System and Map Ports

Use Hitachi Storage Navigator to create the HANA shared virtual volumes under dynamic provisioning pool OS_SH_DT_Pool and then map them to specified storage ports.

Repeat this procedure until you create all of the virtual volumes.

To create a virtual volume for the HANA-shared file system and map ports, follow these steps:

1. From Pools, click OS_SH_DT_Pool.

2. Enter the values shown in Table 16 in the Create LDEVs dialog box.

Table 16 Virtual Volume Creation for HANA Shared LUNs

| For This | Enter This |
| --- | --- |
| Provisioning Type | Click Dynamic Provisioning |
| Drive Type/RPM | Leave at SSD/- |
| RAID Level | Leave at 1 (2D+2P) |
| Select Pool | OS_SH_DT_Pool |
| LDEV Capacity | Type the required volume size for the /hana/shared volume in GB. This is equal to or greater than the memory size of the HANA node. |
| Number of LDEVs | Type 1 |
| Full Allocation | Click Enabled |
| LDEV Name area | For LDEV Name Prefix, type the HANA Shared LUN LDEV name: HANA_SH_N |
| | Type the node number to be added to the name. |
| Options area | Type or click the values for LDKC:CU:DEV according to the VVOL ID column of Table 11. |
| | Click Auto for MP Unit ID of the MPU assignment. |

3. Keep the Storage Navigator session open for Create Virtual Volumes for Log LUNs and Map Ports.

Create Virtual Volumes for Log LUNs and Map Ports

This procedure creates and maps LDEVs to the specified storage ports for the log LUNs.

Use the Hitachi Storage Navigator session previously started.

To provision the LDEVs for log LUNs, follow the steps from the previous section with the following changes:

1. From Pools, click LOG_Pool.

2. Enter the values shown in Table 17 in the Create LDEVs dialog box.

Table 17 LDEV Creation Values for Log LUN

| For This | Enter This |
| --- | --- |
| Provisioning Type | Click Dynamic Provisioning |
| Drive Type/RPM | Click SSD/- |
| RAID Level | Click 1 (2D+2P) |
| Select Pool | LOG_Pool |
| LDEV Capacity | Type the required volume size divided by 4 in GB. For example, if a 512 GB log volume is needed, type 128 GB. |
| Number of LDEVs per Free Space | Type 4 |
| Full Allocation | Click Enabled |
| LDEV Name area | For LDEV Name Prefix, type the HANA Log LDEV name for this node, for example: HANA_LOG_N1_ |
| | For Initial Number, type the HANA Log LDEV. |
| Options area | Type or click the values for LDKC, CU and DEV in LDKC:CU:DEV according to the VVOL ID column of Table 11. |
| | Click the value for the MPU Unit ID. |

3. Keep the Storage Navigator session open for Create Virtual Volumes for Data LUNs and Map Ports.

Create Virtual Volumes for Data LUNs and Map Ports

This procedure creates and maps LDEVs to the specified Hitachi Virtual Storage Platform F370/G370 ports for the Data LUNs.

Use the previously-opened Hitachi Storage Navigator session.

To provision the LDEVs for Data LUNs, follow the steps of the previous sections.

To create virtual volumes for data LUNs and map ports, follow these steps:

1. From Pools, click OS_SH_DT_Pool.

2. Enter the values shown in Table 18 in the Create LDEVs dialog box.

Table 18 LDEV Creation Values for Data LUN

| For This | Enter This |
| --- | --- |
| Provisioning Type | Click Dynamic Provisioning |
| Drive Type/RPM | Click SSD/- |
| RAID Level | Click 1 (2D+2P) |
| Select Pool | OS_SH_DT_Pool |
| LDEV Capacity | Type the required volume size divided by 4 in GB. For example, if a 4096 GB data volume is needed, type 1024 GB. |
| Number of LDEVs per Free Space | Type 4 |
| Full Allocation | Enabled |
| LDEV Name area | For LDEV Name Prefix, type the HANA Data LDEV name: HANA_DT_VVOL_N |
| | For Initial Number, type the HANA node number. |
| Options area | Type or click the values for LDKC, CU and DEV in LDKC:CU:DEV according to the VVOL ID column of Table 11. |
| | Click the value for the MPU Unit ID. |

3. Keep the Storage Navigator session open for the Configure the Host Groups procedure.

Storage Port Configuration

The following table lists the configuration and port mapping for Hitachi VSP Fx00 and Gx00 models.

Table 19 Storage Port Mapping for Validated SAP HANA Nodes using SSDs

| HBA Port |

Fiber Channel Switch Port Name |

| Node | Node Port Name | Node Port Speed | Switch Port (Host) | Switch Port (Storage) | Target Port-Host Group (VSP F/G370) | Target Port-Host Group (VSP F/G700) | Target Port-Host Group (VSP F/G900) | Storage Port Speed | Port Security |
|---|---|---|---|---|---|---|---|---|---|
| Node1 | Port 0 | 16 Gb/s | SW-1-P0 | SW-1-P32 | 1A-Host Group 1 | 1A-Host Group 1 | 1A-Host Group 1 | 32 Gb/s | Enabled |
| Node1 | Port 1 | 16 Gb/s | SW-2-P0 | SW-2-P32 | 2A-Host Group 1 | 2A-Host Group 1 | 2A-Host Group 1 | 32 Gb/s | Enabled |
| Node2 | Port 0 | 16 Gb/s | SW-1-P1 | SW-1-P32 | 1A-Host Group 2 | 1A-Host Group 2 | 1A-Host Group 2 | 32 Gb/s | Enabled |
| Node2 | Port 1 | 16 Gb/s | SW-2-P1 | SW-2-P32 | 2A-Host Group 2 | 2A-Host Group 2 | 2A-Host Group 2 | 32 Gb/s | Enabled |
| Node3 | Port 0 | 16 Gb/s | SW-1-P2 | SW-1-P33 | 3A-Host Group 1 | 3A-Host Group 1 | 3A-Host Group 1 | 32 Gb/s | Enabled |
| Node3 | Port 1 | 16 Gb/s | SW-2-P2 | SW-2-P33 | 4A-Host Group 1 | 4A-Host Group 1 | 4A-Host Group 1 | 32 Gb/s | Enabled |
| Node4 | Port 0 | 16 Gb/s | SW-1-P3 | SW-1-P33 | 3A-Host Group 2 | 3A-Host Group 2 | 3A-Host Group 2 | 32 Gb/s | Enabled |
| Node4 | Port 1 | 16 Gb/s | SW-2-P3 | SW-2-P33 | 4A-Host Group 2 | 4A-Host Group 2 | 4A-Host Group 2 | 32 Gb/s | Enabled |
| Node5 | Port 0 | 16 Gb/s | SW-1-P4 | SW-1-P34 | 5A-Host Group 1 | 5A-Host Group 1 | 5A-Host Group 1 | 32 Gb/s | Enabled |
| Node5 | Port 1 | 16 Gb/s | SW-2-P4 | SW-2-P34 | 6A-Host Group 1 | 6A-Host Group 1 | 6A-Host Group 1 | 32 Gb/s | Enabled |
| Node6 | Port 0 | 16 Gb/s | SW-1-P5 | SW-1-P34 | 5A-Host Group 2 | 5A-Host Group 2 | 5A-Host Group 2 | 32 Gb/s | Enabled |
| Node6 | Port 1 | 16 Gb/s | SW-2-P5 | SW-2-P34 | 6A-Host Group 2 | 6A-Host Group 2 | 6A-Host Group 2 | 32 Gb/s | Enabled |
| Node7 | Port 0 | 16 Gb/s | SW-1-P6 | SW-1-P35 | 7A-Host Group 1 | 7A-Host Group 1 | 7A-Host Group 1 | 32 Gb/s | Enabled |
| Node7 | Port 1 | 16 Gb/s | SW-2-P6 | SW-2-P35 | 8A-Host Group 1 | 8A-Host Group 1 | 8A-Host Group 1 | 32 Gb/s | Enabled |
| Node8 | Port 0 | 16 Gb/s | SW-1-P7 | SW-1-P35 | 7A-Host Group 2 | 7A-Host Group 2 | 7A-Host Group 2 | 32 Gb/s | Enabled |
| Node8 | Port 1 | 16 Gb/s | SW-2-P7 | SW-2-P35 | 8A-Host Group 2 | 8A-Host Group 2 | 8A-Host Group 2 | 32 Gb/s | Enabled |
| Node9 | Port 0 | 16 Gb/s | SW-1-P8 | SW-1-P36 | 1B-Host Group 1 | 1B-Host Group 1 | 1B-Host Group 1 | 32 Gb/s | Enabled |
| Node9 | Port 1 | 16 Gb/s | SW-2-P8 | SW-2-P36 | 2B-Host Group 1 | 2B-Host Group 1 | 2B-Host Group 1 | 32 Gb/s | Enabled |
| Node10 | Port 0 | 16 Gb/s | SW-1-P9 | SW-1-P36 | 1B-Host Group 2 | 1B-Host Group 2 | 1B-Host Group 2 | 32 Gb/s | Enabled |
| Node10 | Port 1 | 16 Gb/s | SW-2-P9 | SW-2-P36 | 2B-Host Group 2 | 2B-Host Group 2 | 2B-Host Group 2 | 32 Gb/s | Enabled |
| Node11 | Port 0 | 16 Gb/s | SW-1-P10 | SW-1-P37 | 3B-Host Group 1 | 3B-Host Group 1 | 3B-Host Group 1 | 32 Gb/s | Enabled |
| Node11 | Port 1 | 16 Gb/s | SW-2-P10 | SW-2-P37 | 4B-Host Group 1 | 4B-Host Group 1 | 4B-Host Group 1 | 32 Gb/s | Enabled |
| Node12 | Port 0 | 16 Gb/s | SW-1-P11 | SW-1-P37 | 3B-Host Group 2 | 3B-Host Group 2 | 3B-Host Group 2 | 32 Gb/s | Enabled |
| Node12 | Port 1 | 16 Gb/s | SW-2-P11 | SW-2-P37 | 4B-Host Group 2 | 4B-Host Group 2 | 4B-Host Group 2 | 32 Gb/s | Enabled |
| Node13 | Port 0 | 16 Gb/s | SW-1-P12 | SW-1-P38 | 5B-Host Group 1 | 5B-Host Group 1 | 5B-Host Group 1 | 32 Gb/s | Enabled |
| Node13 | Port 1 | 16 Gb/s | SW-2-P12 | SW-2-P38 | 6B-Host Group 1 | 6B-Host Group 1 | 6B-Host Group 1 | 32 Gb/s | Enabled |
| Node14 | Port 0 | 16 Gb/s | SW-1-P13 | SW-1-P38 | 5B-Host Group 2 | 5B-Host Group 2 | 5B-Host Group 2 | 32 Gb/s | Enabled |
| Node14 | Port 1 | 16 Gb/s | SW-2-P13 | SW-2-P38 | 6B-Host Group 2 | 6B-Host Group 2 | 6B-Host Group 2 | 32 Gb/s | Enabled |
| Node15 | Port 0 | 16 Gb/s | SW-1-P14 | SW-1-P39 | 7B-Host Group 1 | 7B-Host Group 1 | 7B-Host Group 1 | 32 Gb/s | Enabled |
| Node15 | Port 1 | 16 Gb/s | SW-2-P14 | SW-2-P39 | 8B-Host Group 1 | 8B-Host Group 1 | 8B-Host Group 1 | 32 Gb/s | Enabled |
| Node16 | Port 0 | 16 Gb/s | SW-1-P15 | SW-1-P39 | 7B-Host Group 2 | 7B-Host Group 2 | 7B-Host Group 2 | 32 Gb/s | Enabled |
| Node16 | Port 1 | 16 Gb/s | SW-2-P15 | SW-2-P39 | 8B-Host Group 2 | 8B-Host Group 2 | 8B-Host Group 2 | 32 Gb/s | Enabled |
| Node17 | Port 0 | 16 Gb/s | SW-1-P16 | SW-1-P40 | N/A | 1C-Host Group 1 | 1C-Host Group 1 | 32 Gb/s | Enabled |
| Node17 | Port 1 | 16 Gb/s | SW-2-P16 | SW-2-P40 | N/A | 2C-Host Group 1 | 2C-Host Group 1 | 32 Gb/s | Enabled |
| Node18 | Port 0 | 16 Gb/s | SW-1-P17 | SW-1-P40 | N/A | 1C-Host Group 2 | 1C-Host Group 2 | 32 Gb/s | Enabled |
| Node18 | Port 1 | 16 Gb/s | SW-2-P17 | SW-2-P40 | N/A | 2C-Host Group 2 | 2C-Host Group 2 | 32 Gb/s | Enabled |
| Node19 | Port 0 | 16 Gb/s | SW-1-P18 | SW-1-P41 | N/A | 3C-Host Group 1 | 3C-Host Group 1 | 32 Gb/s | Enabled |
| Node19 | Port 1 | 16 Gb/s | SW-2-P18 | SW-2-P41 | N/A | 4C-Host Group 1 | 4C-Host Group 1 | 32 Gb/s | Enabled |
| Node20 | Port 0 | 16 Gb/s | SW-1-P19 | SW-1-P41 | N/A | 3C-Host Group 2 | 3C-Host Group 2 | 32 Gb/s | Enabled |
| Node20 | Port 1 | 16 Gb/s | SW-2-P19 | SW-2-P41 | N/A | 4C-Host Group 2 | 4C-Host Group 2 | 32 Gb/s | Enabled |
| Node21 | Port 0 | 16 Gb/s | SW-1-P20 | SW-1-P42 | N/A | 5C-Host Group 1 | 5C-Host Group 1 | 32 Gb/s | Enabled |
| Node21 | Port 1 | 16 Gb/s | SW-2-P20 | SW-2-P42 | N/A | 6C-Host Group 1 | 6C-Host Group 1 | 32 Gb/s | Enabled |
| Node22 | Port 0 | 16 Gb/s | SW-1-P21 | SW-1-P42 | N/A | 5C-Host Group 2 | 5C-Host Group 2 | 32 Gb/s | Enabled |
| Node22 | Port 1 | 16 Gb/s | SW-2-P21 | SW-2-P42 | N/A | 6C-Host Group 2 | 6C-Host Group 2 | 32 Gb/s | Enabled |
| Node23 | Port 0 | 16 Gb/s | SW-1-P22 | SW-1-P43 | N/A | 7C-Host Group 1 | 7C-Host Group 1 | 32 Gb/s | Enabled |
| Node23 | Port 1 | 16 Gb/s | SW-2-P22 | SW-2-P43 | N/A | 8C-Host Group 1 | 8C-Host Group 1 | 32 Gb/s | Enabled |
| Node24 | Port 0 | 16 Gb/s | SW-1-P23 | SW-1-P43 | N/A | 7C-Host Group 2 | 7C-Host Group 2 | 32 Gb/s | Enabled |
| Node24 | Port 1 | 16 Gb/s | SW-2-P23 | SW-2-P43 | N/A | 8C-Host Group 2 | 8C-Host Group 2 | 32 Gb/s | Enabled |
| Node25 | Port 0 | 16 Gb/s | SW-1-P24 | SW-1-P44 | N/A | 1D-Host Group 1 | 1D-Host Group 1 | 32 Gb/s | Enabled |
| Node25 | Port 1 | 16 Gb/s | SW-2-P24 | SW-2-P44 | N/A | 2D-Host Group 1 | 2D-Host Group 1 | 32 Gb/s | Enabled |
| Node26 | Port 0 | 16 Gb/s | SW-1-P25 | SW-1-P44 | N/A | 1D-Host Group 2 | 1D-Host Group 2 | 32 Gb/s | Enabled |
| Node26 | Port 1 | 16 Gb/s | SW-2-P25 | SW-2-P44 | N/A | 2D-Host Group 2 | 2D-Host Group 2 | 32 Gb/s | Enabled |
| Node27 | Port 0 | 16 Gb/s | SW-1-P26 | SW-1-P45 | N/A | 3D-Host Group 1 | 3D-Host Group 1 | 32 Gb/s | Enabled |
| Node27 | Port 1 | 16 Gb/s | SW-2-P26 | SW-2-P45 | N/A | 4D-Host Group 1 | 4D-Host Group 1 | 32 Gb/s | Enabled |
| Node28 | Port 0 | 16 Gb/s | SW-1-P27 | SW-1-P45 | N/A | 3D-Host Group 2 | 3D-Host Group 2 | 32 Gb/s | Enabled |
| Node28 | Port 1 | 16 Gb/s | SW-2-P27 | SW-2-P45 | N/A | 4D-Host Group 2 | 4D-Host Group 2 | 32 Gb/s | Enabled |
| Node29 | Port 0 | 16 Gb/s | SW-1-P28 | SW-1-P46 | N/A | 5D-Host Group 1 | 5D-Host Group 1 | 32 Gb/s | Enabled |
| Node29 | Port 1 | 16 Gb/s | SW-2-P28 | SW-2-P46 | N/A | 6D-Host Group 1 | 6D-Host Group 1 | 32 Gb/s | Enabled |
| Node30 | Port 0 | 16 Gb/s | SW-1-P29 | SW-1-P46 | N/A | 5D-Host Group 2 | 5D-Host Group 2 | 32 Gb/s | Enabled |
| Node30 | Port 1 | 16 Gb/s | SW-2-P29 | SW-2-P46 | N/A | 6D-Host Group 2 | 6D-Host Group 2 | 32 Gb/s | Enabled |
| Node31 | Port 0 | 16 Gb/s | SW-1-P30 | SW-1-P47 | N/A | 7D-Host Group 1 | 7D-Host Group 1 | 32 Gb/s | Enabled |
| Node31 | Port 1 | 16 Gb/s | SW-2-P30 | SW-2-P47 | N/A | 8D-Host Group 1 | 8D-Host Group 1 | 32 Gb/s | Enabled |
| Node32 | Port 0 | 16 Gb/s | SW-1-P31 | SW-1-P47 | N/A | 7D-Host Group 2 | 7D-Host Group 2 | 32 Gb/s | Enabled |
| Node32 | Port 1 | 16 Gb/s | SW-2-P31 | SW-2-P47 | N/A | 8D-Host Group 2 | 8D-Host Group 2 | 32 Gb/s | Enabled |
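The port assignments in the table follow a regular pattern, which the Python sketch below reproduces from the node number. The pattern is inferred from the table rows only; the function name `port_mappings` and the `PortMapping` fields are hypothetical and are not part of any Cisco or Hitachi tool. It may be useful for cross-checking a cabling or zoning plan against the table.

```python
from typing import List, NamedTuple, Optional


class PortMapping(NamedTuple):
    node: str
    node_port: str
    host_switch_port: str
    storage_switch_port: str
    target_host_group: str                  # VSP F/G700 and F/G900
    g370_target_host_group: Optional[str]   # None where the table shows N/A


def port_mappings(node: int) -> List[PortMapping]:
    """Return the Port 0 and Port 1 rows for a node numbered 1 to 32."""
    if not 1 <= node <= 32:
        raise ValueError("the table covers Node1 through Node32 only")
    i = node - 1
    letter = "ABCD"[i // 8]       # eight nodes share each port letter
    pair = (i % 8) // 2           # two nodes share each storage target port
    host_group = i % 2 + 1        # Host Group 1 or 2 on that target port
    rows = []
    for fabric in (1, 2):         # Port 0 uses switch 1, Port 1 uses switch 2
        target = f"{2 * pair + fabric}{letter}-Host Group {host_group}"
        rows.append(PortMapping(
            node=f"Node{node}",
            node_port=f"Port {fabric - 1}",
            host_switch_port=f"SW-{fabric}-P{i}",
            storage_switch_port=f"SW-{fabric}-P{32 + i // 2}",
            target_host_group=target,
            # The table lists VSP F/G370 entries for Node1 to Node16 only.
            g370_target_host_group=target if node <= 16 else None,
        ))
    return rows


if __name__ == "__main__":
    for row in port_mappings(12):
        print(row)
```

Calling `port_mappings(n)` for each node and comparing the result with the corresponding table rows is one way to spot a transcription error before zoning the fabric.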

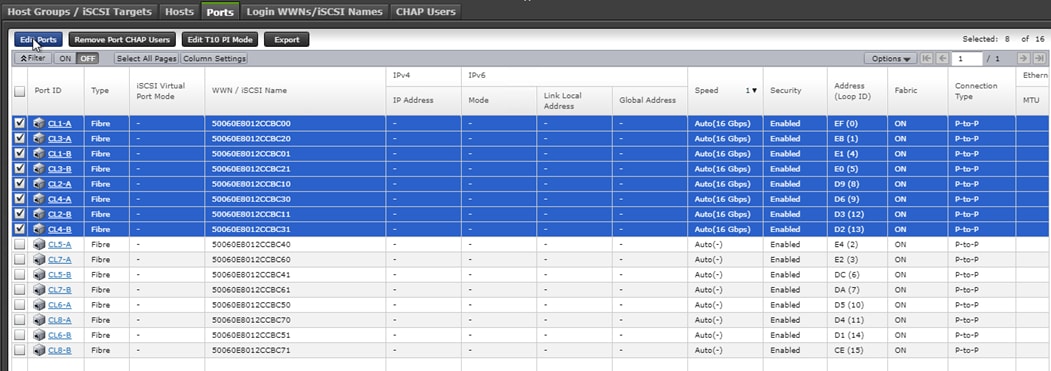

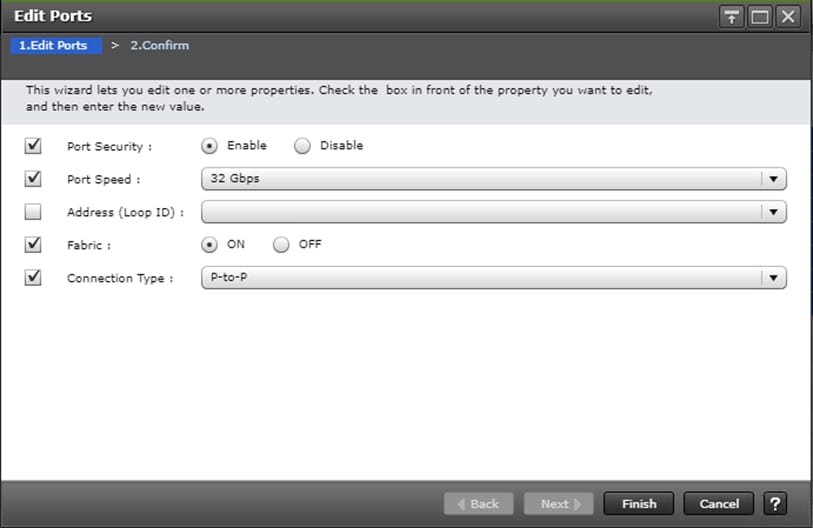

Configure the Host Groups

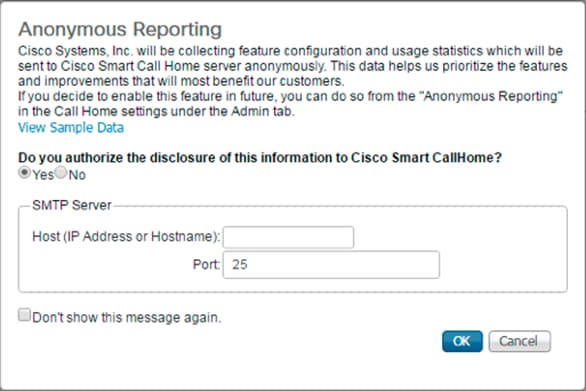

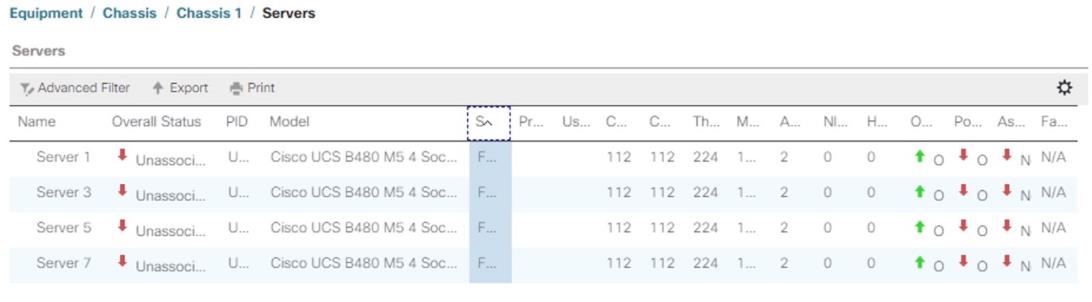

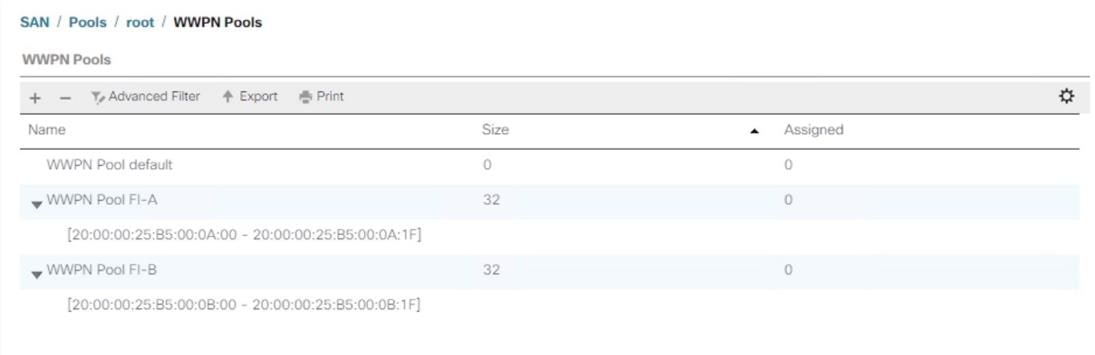

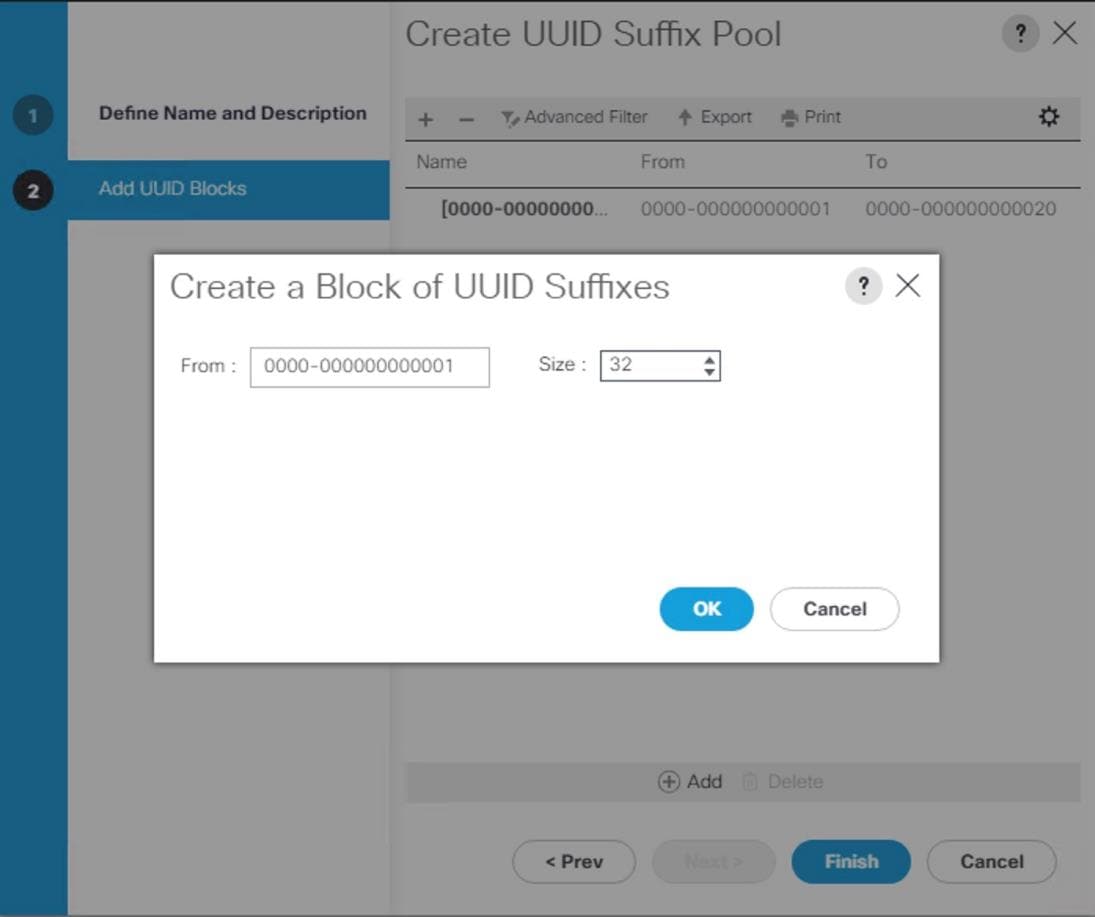

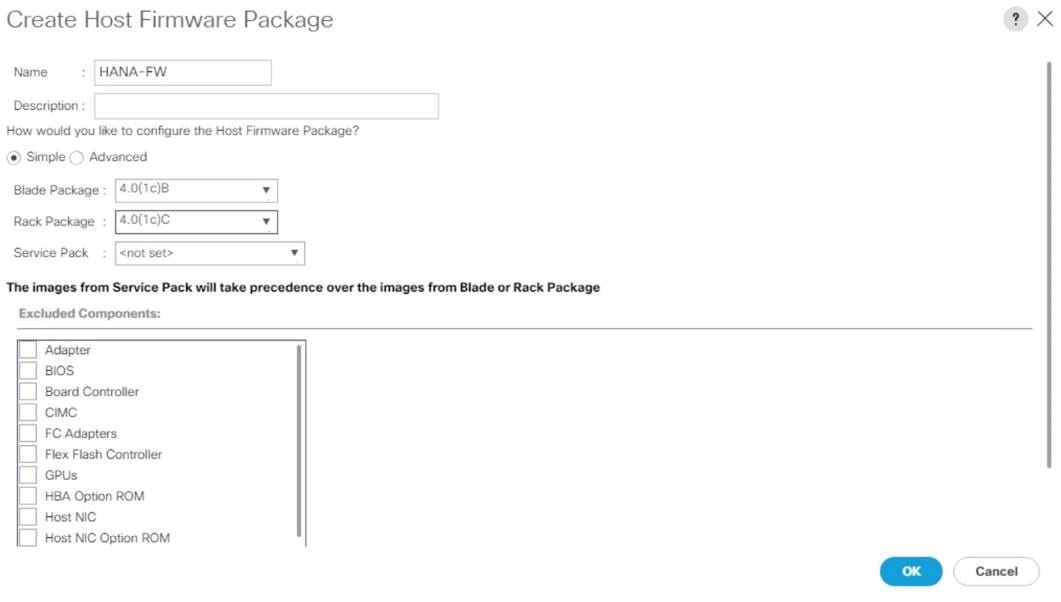

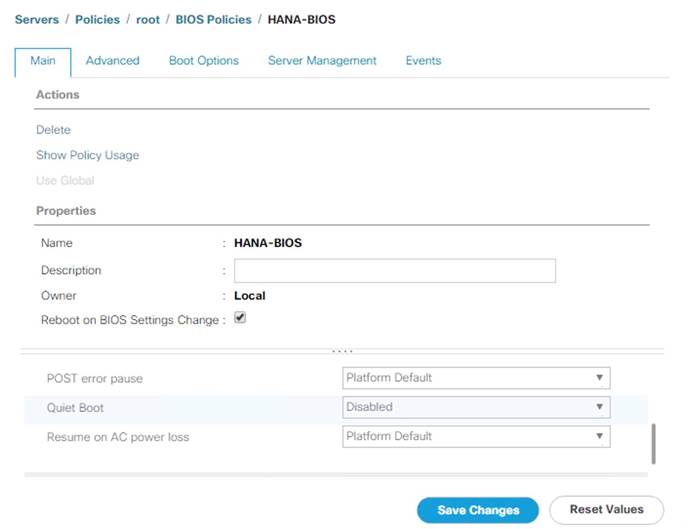

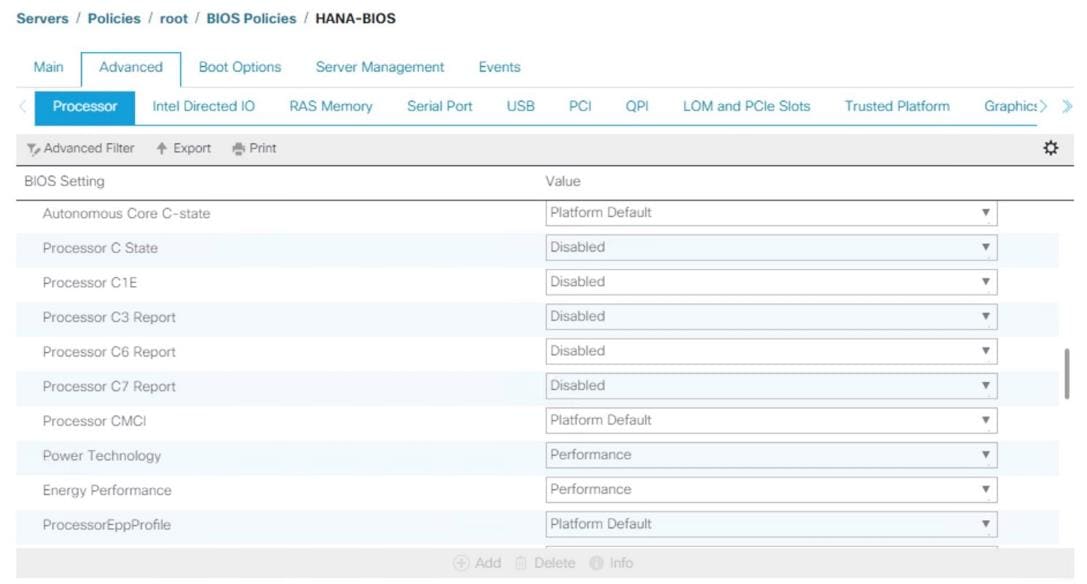

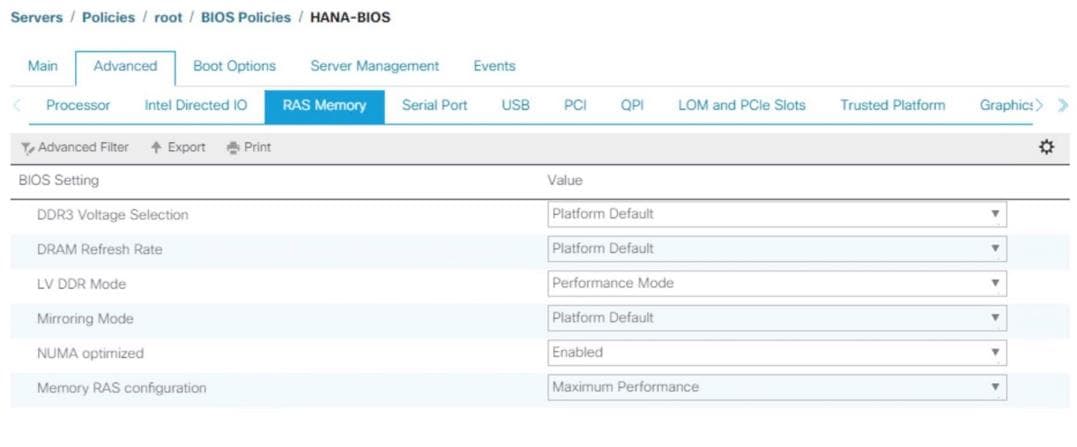

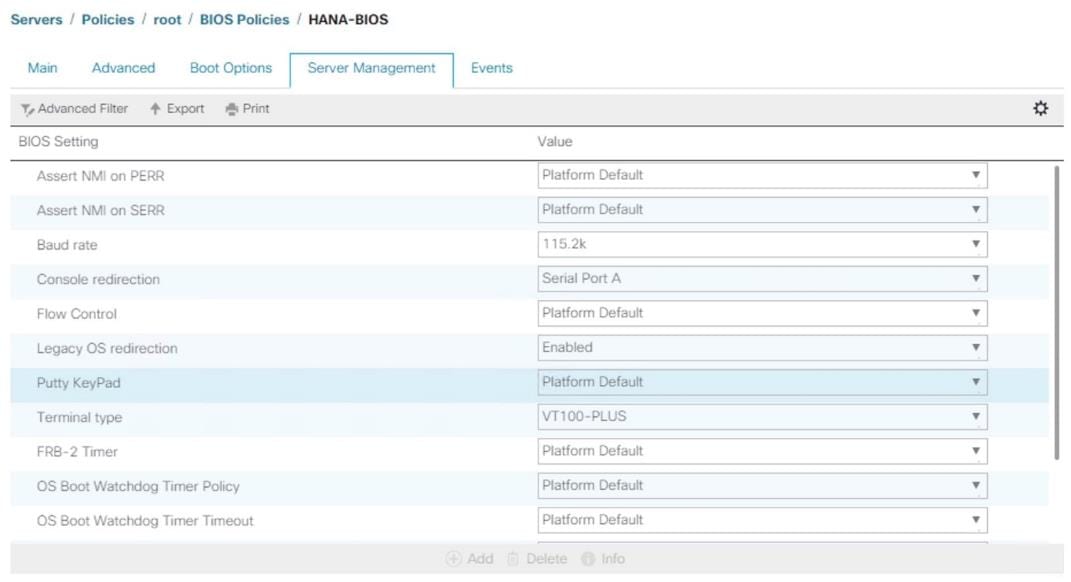

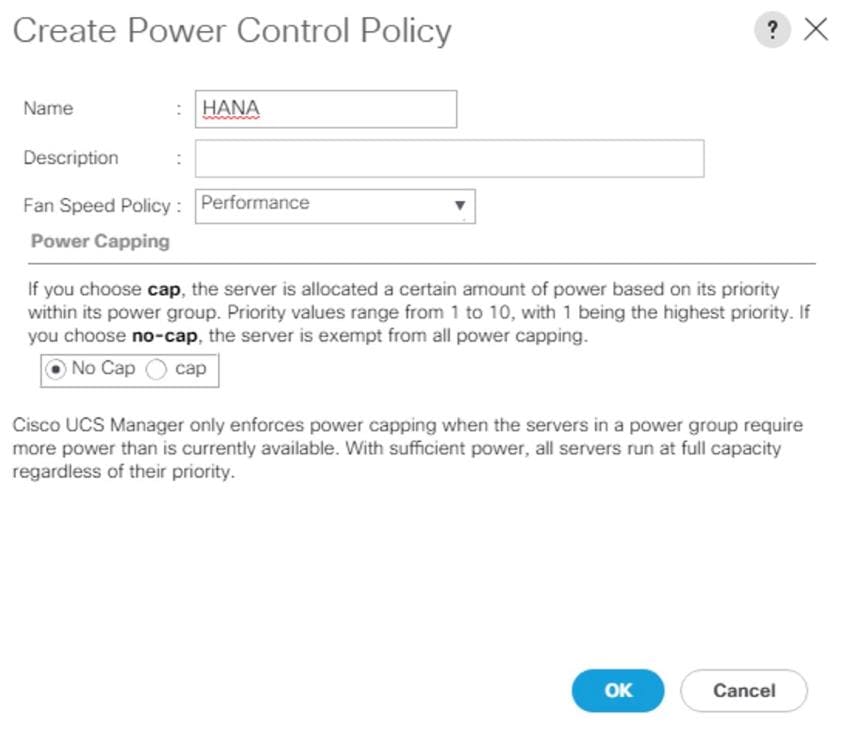

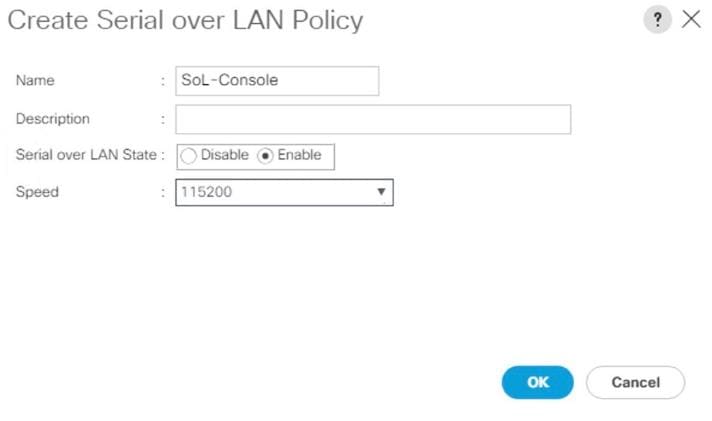

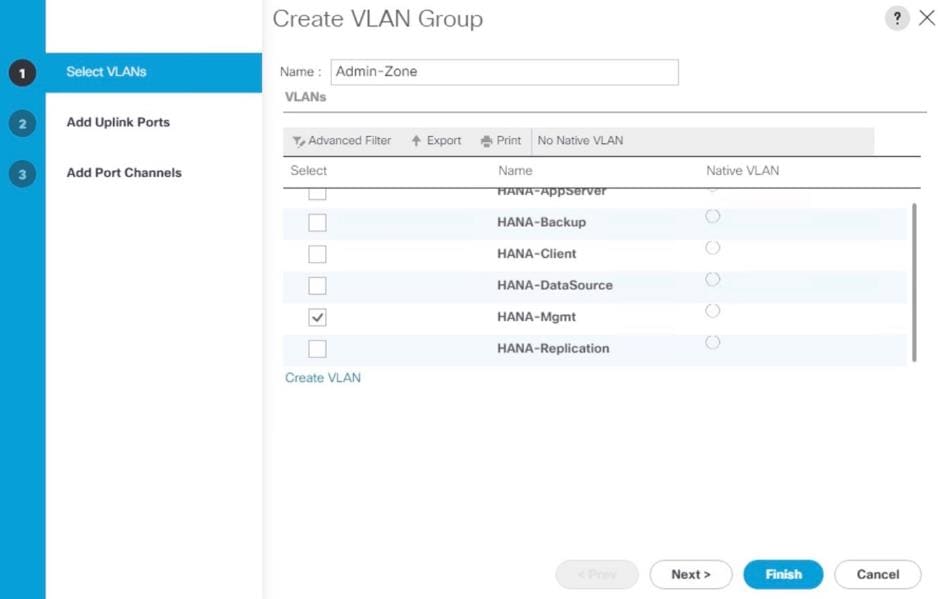

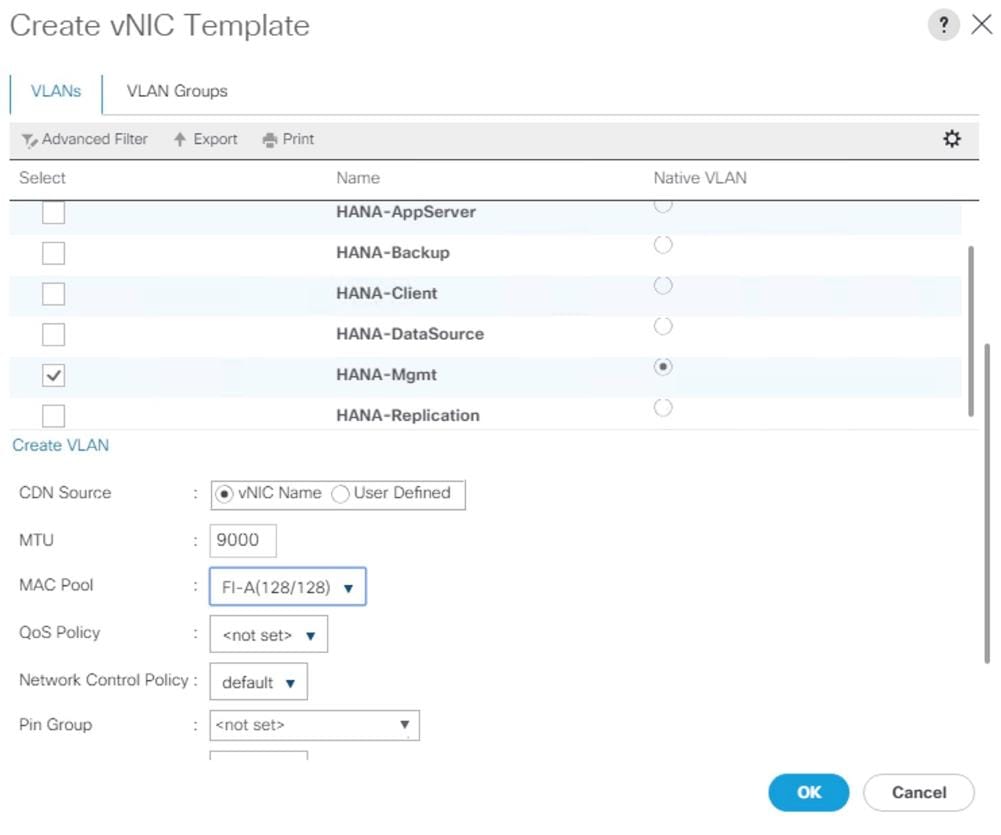

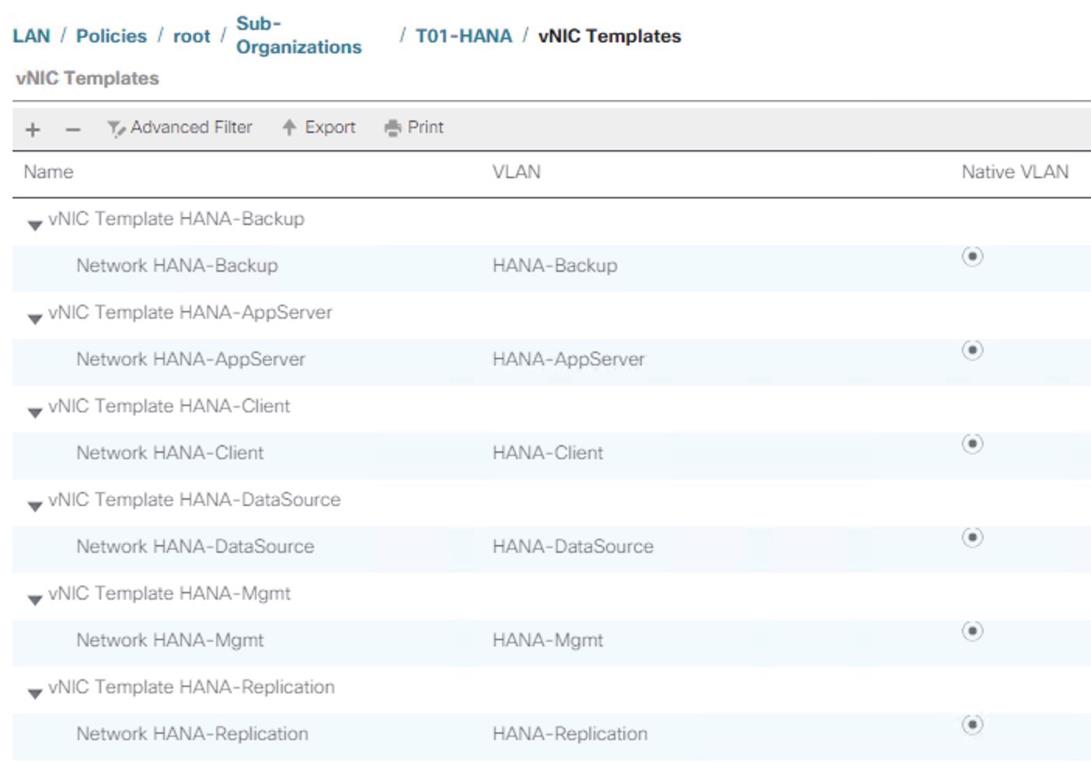

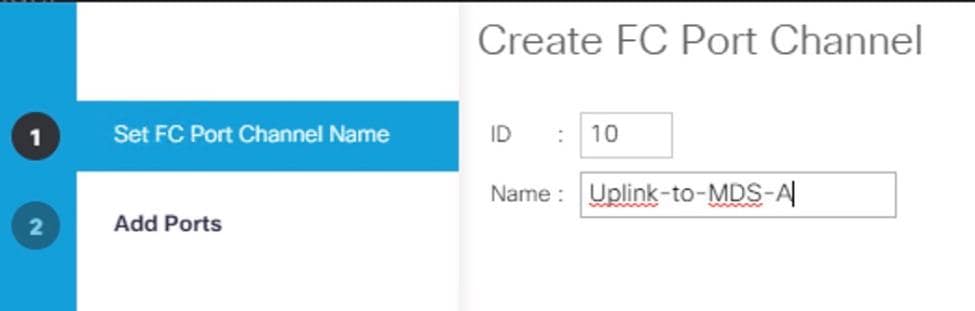

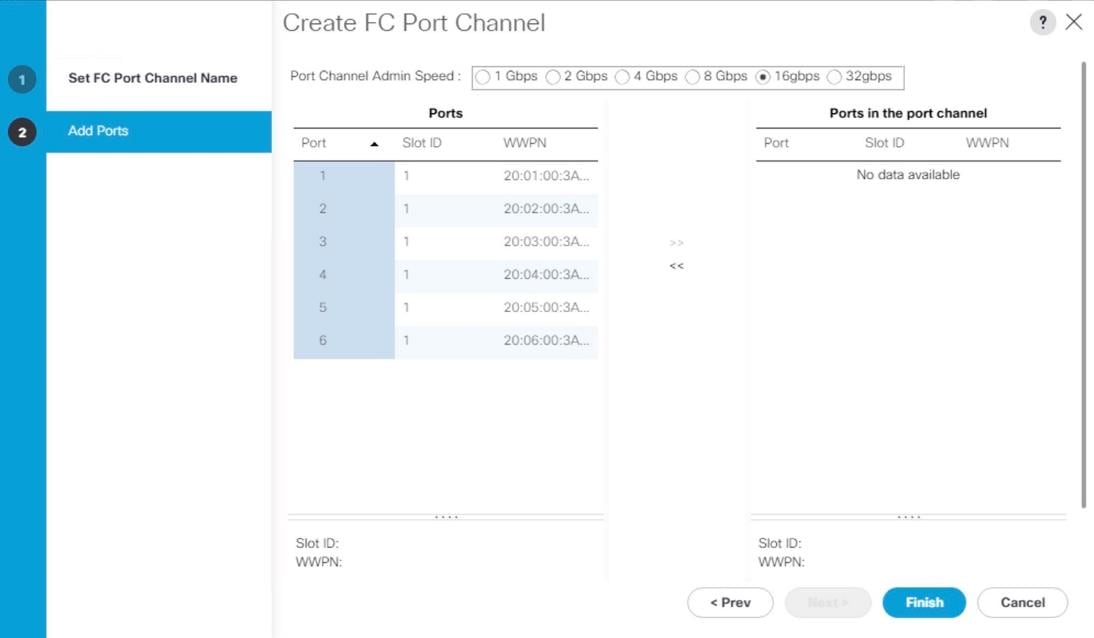

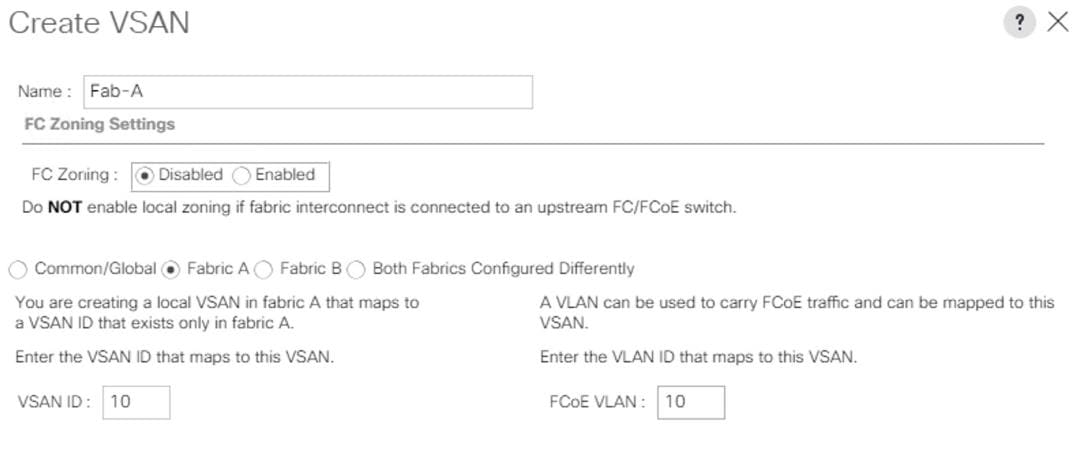

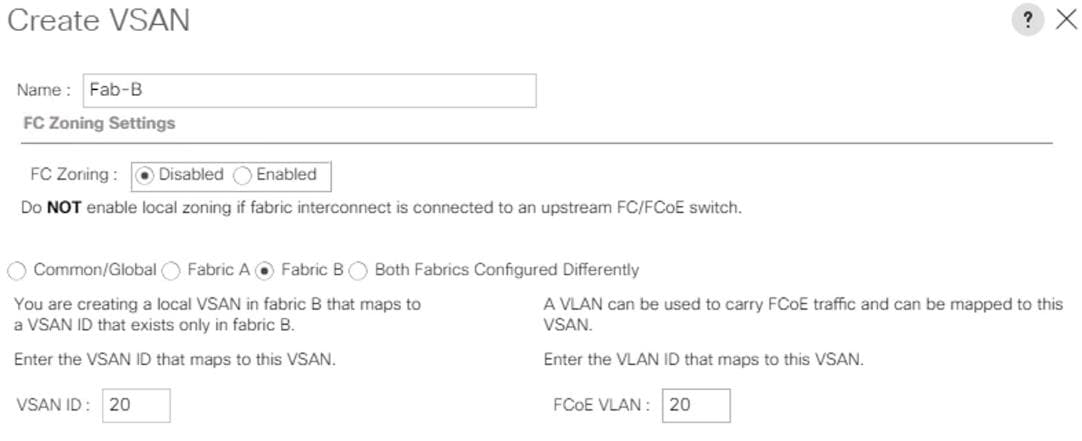

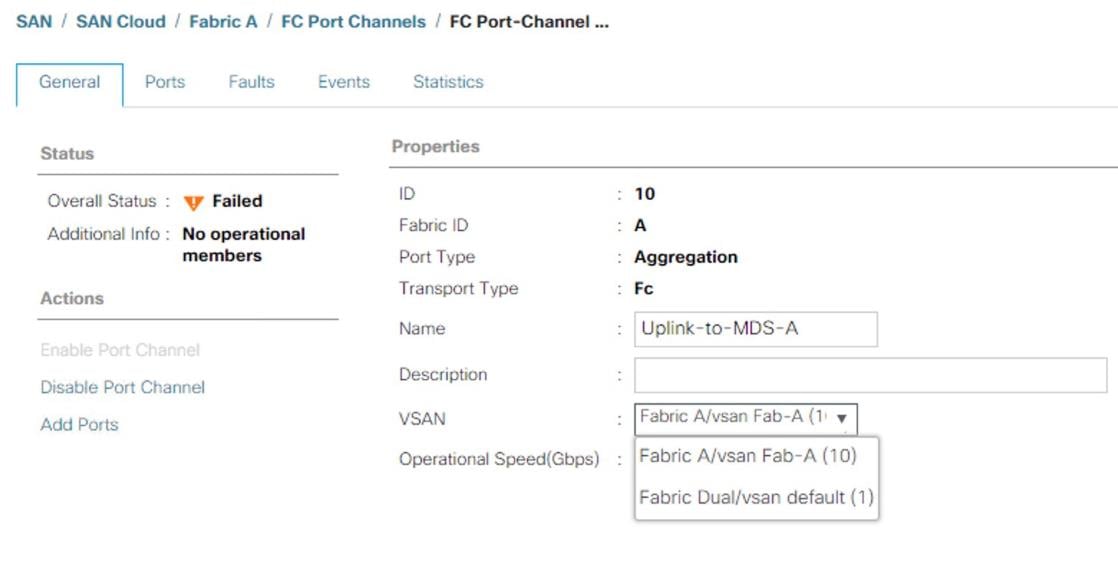

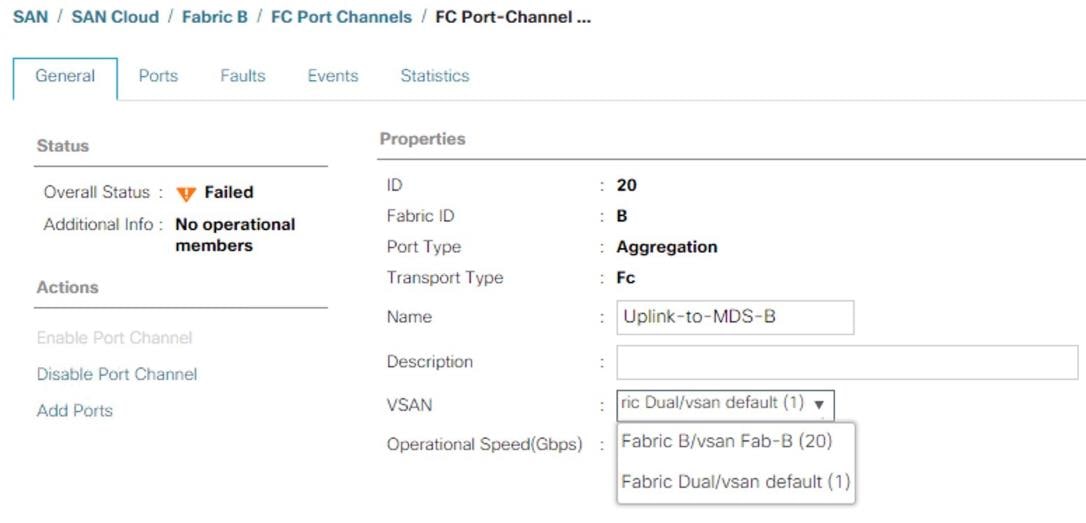

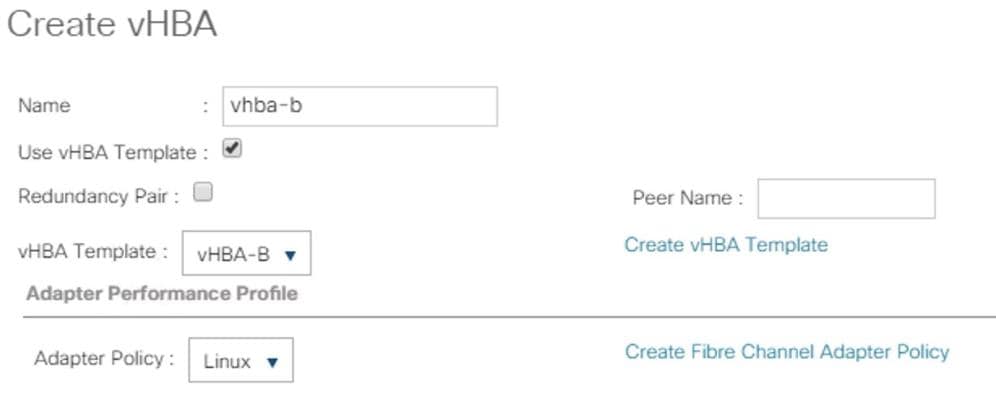

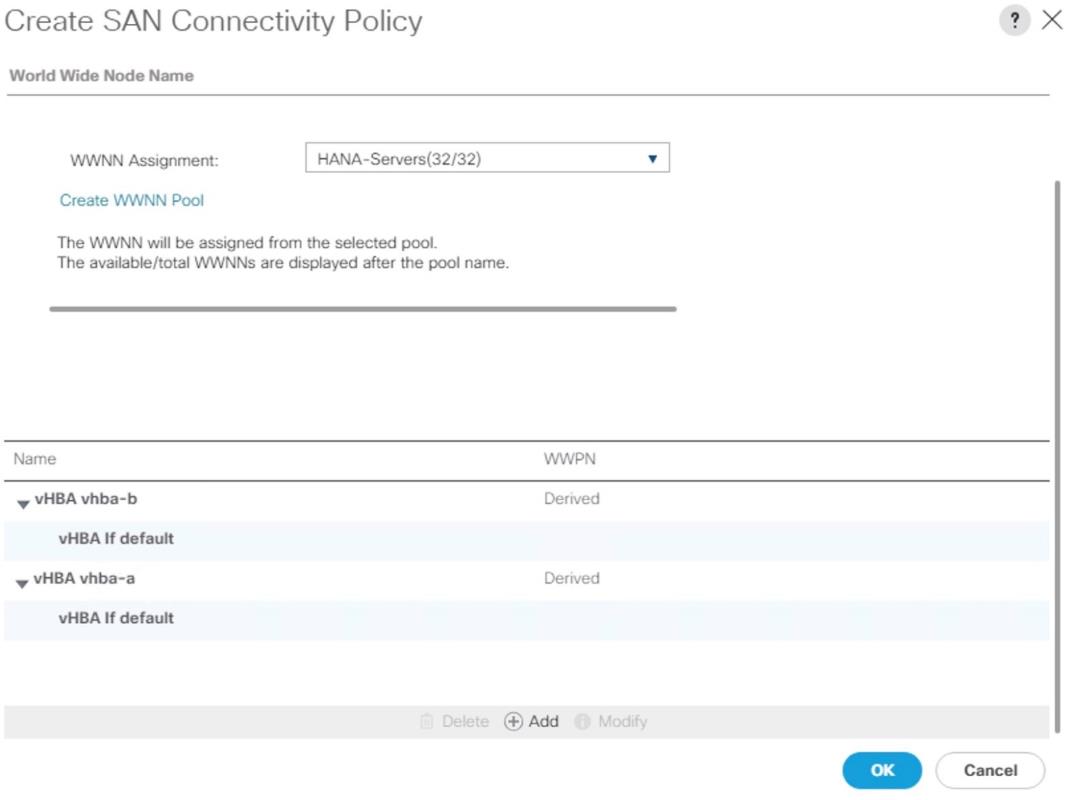

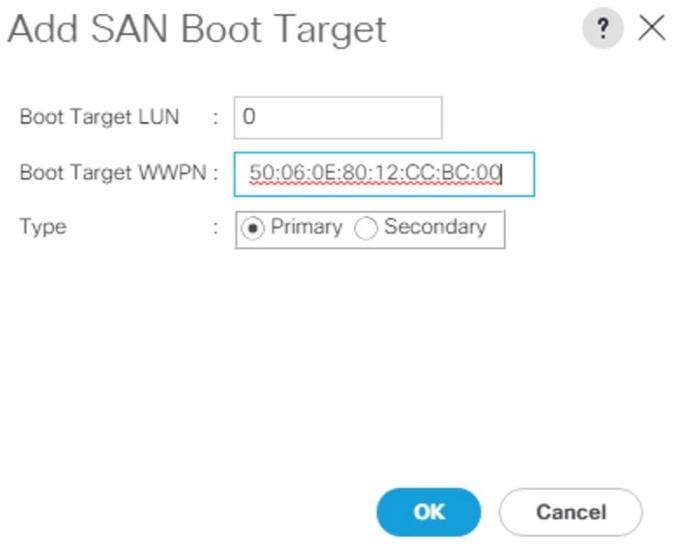

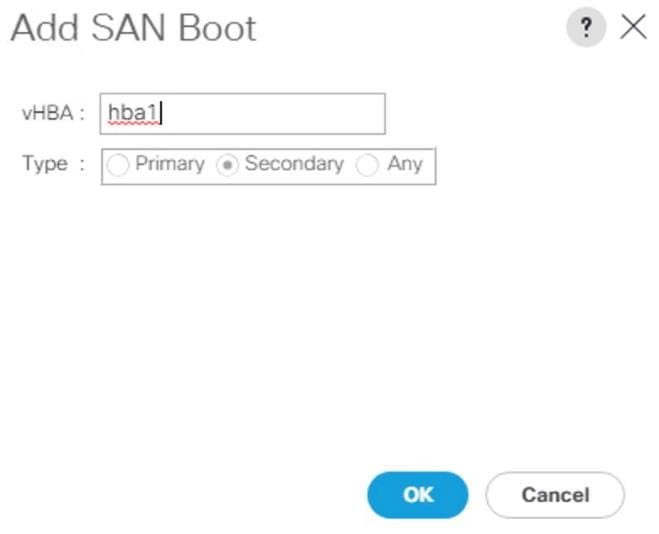

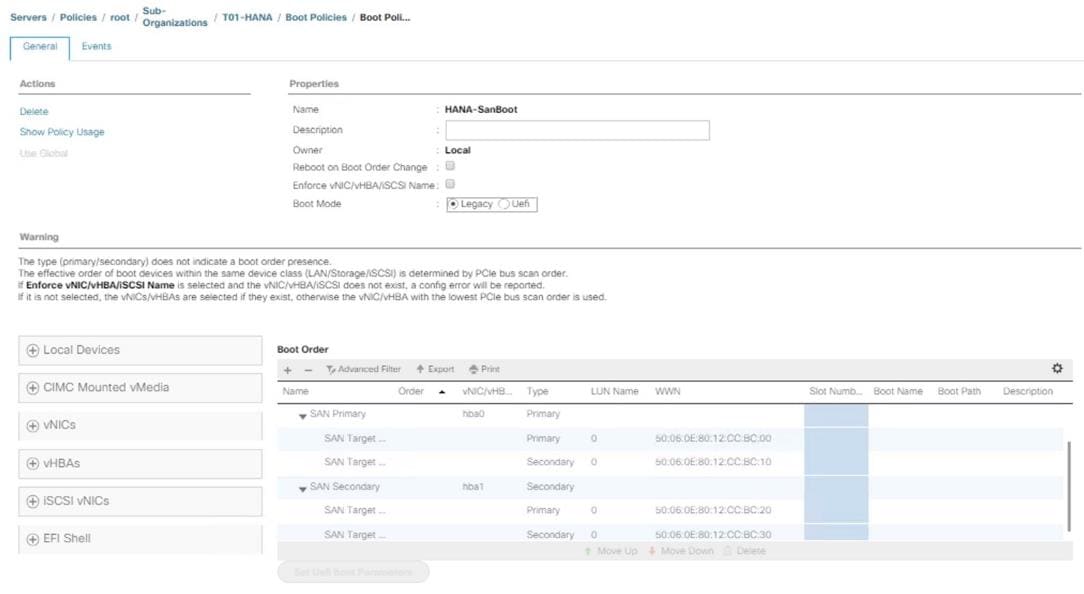

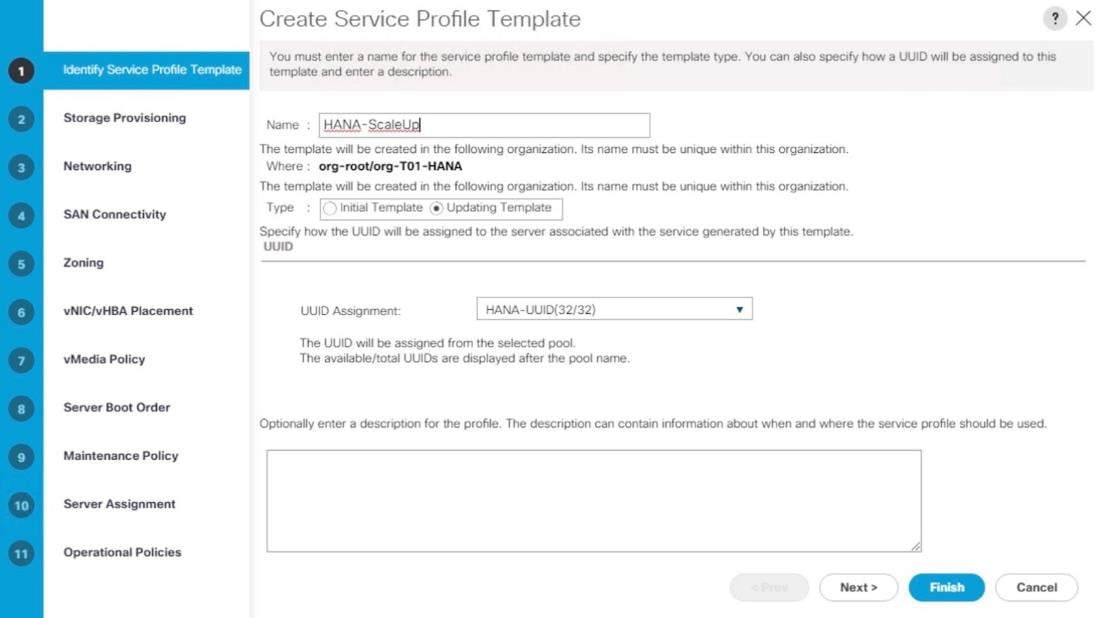

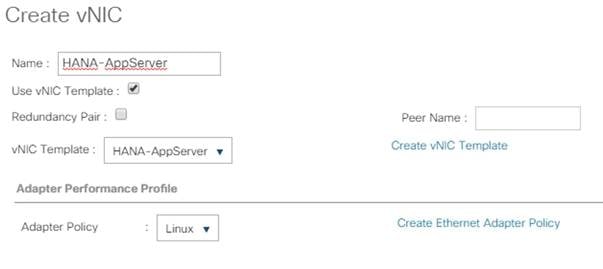

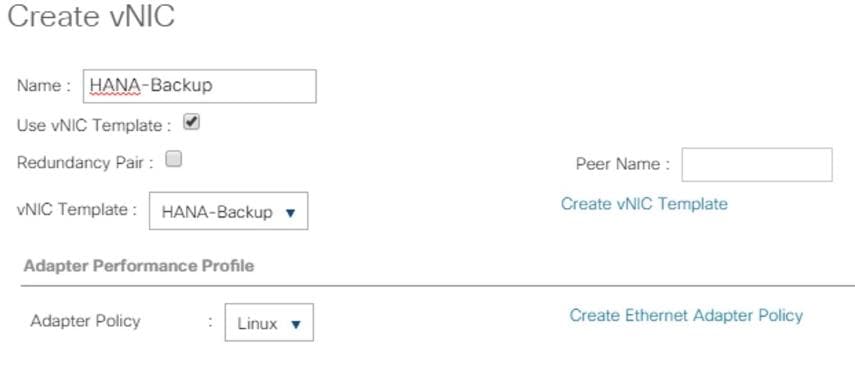

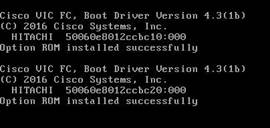

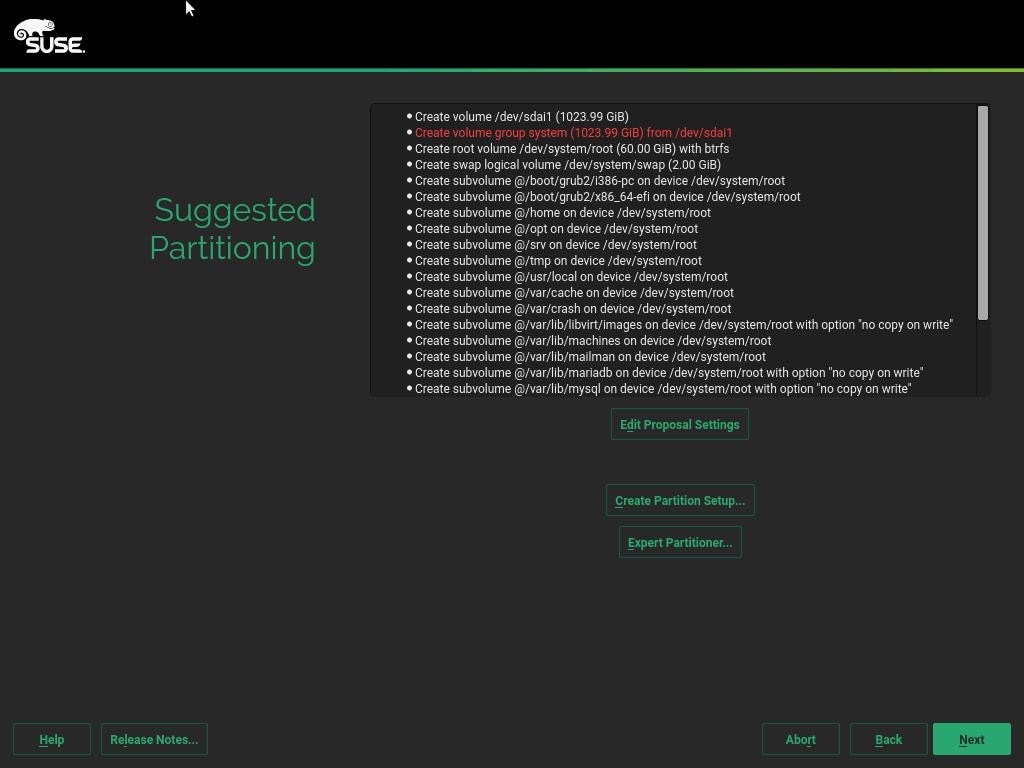

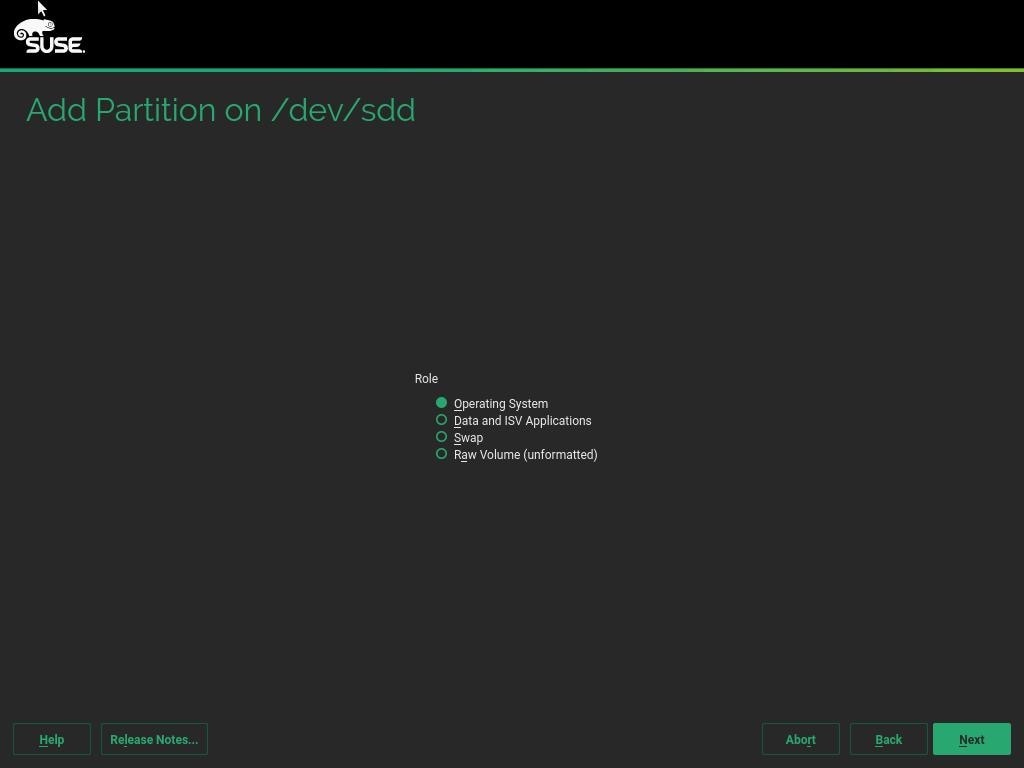

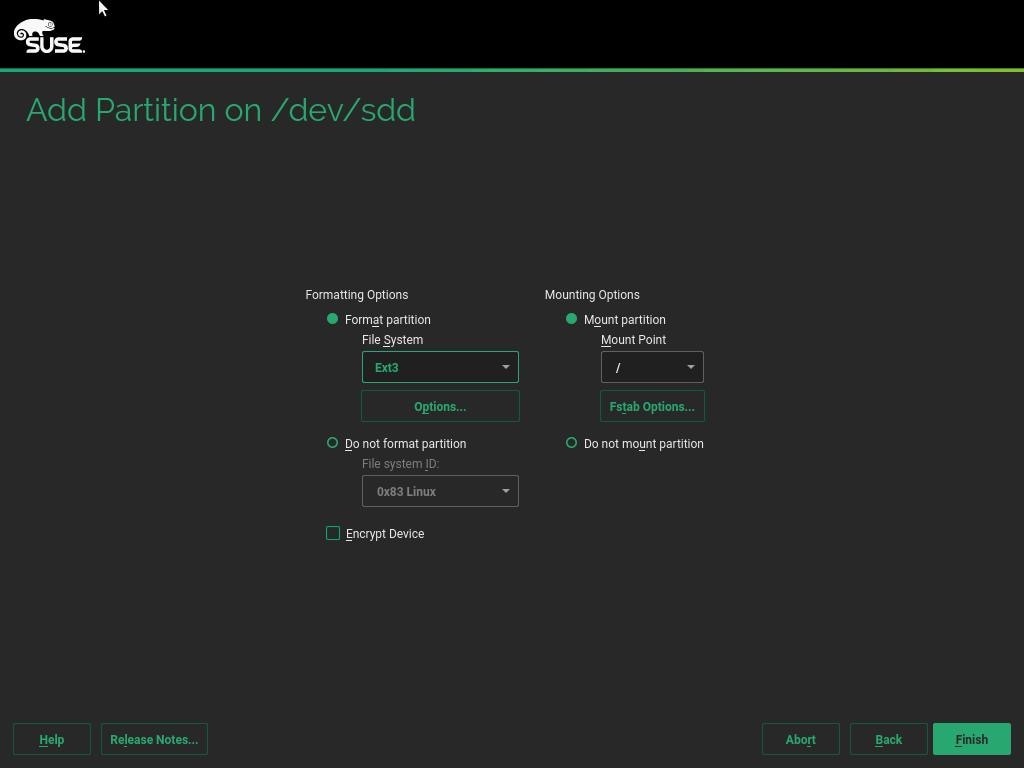

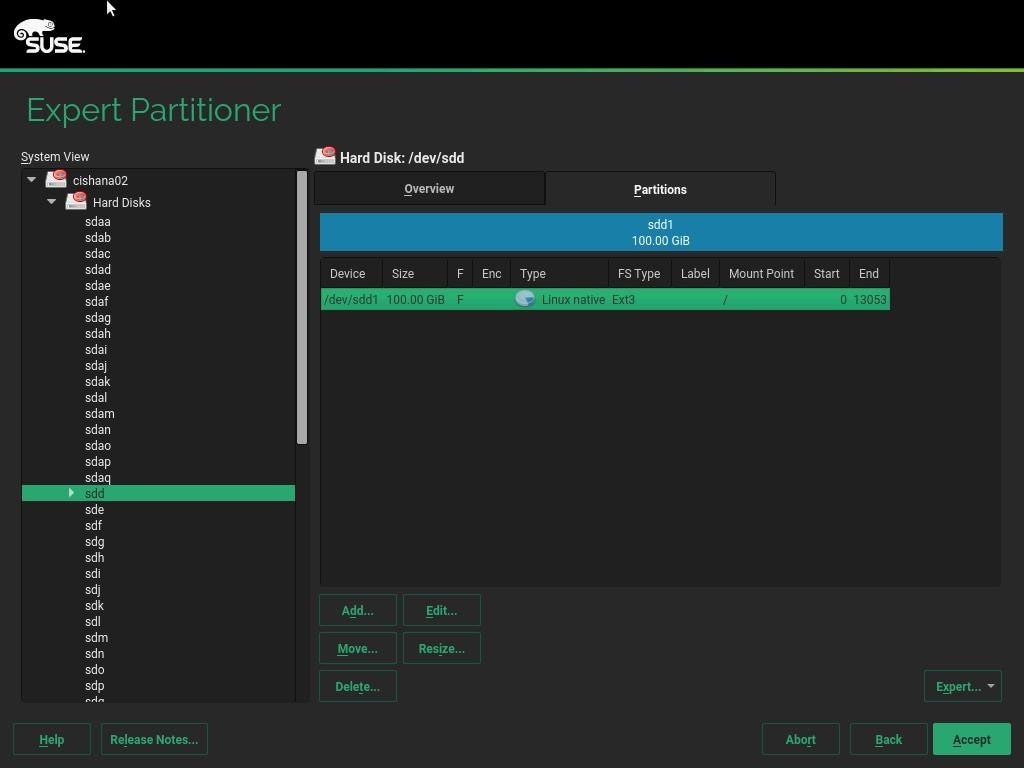

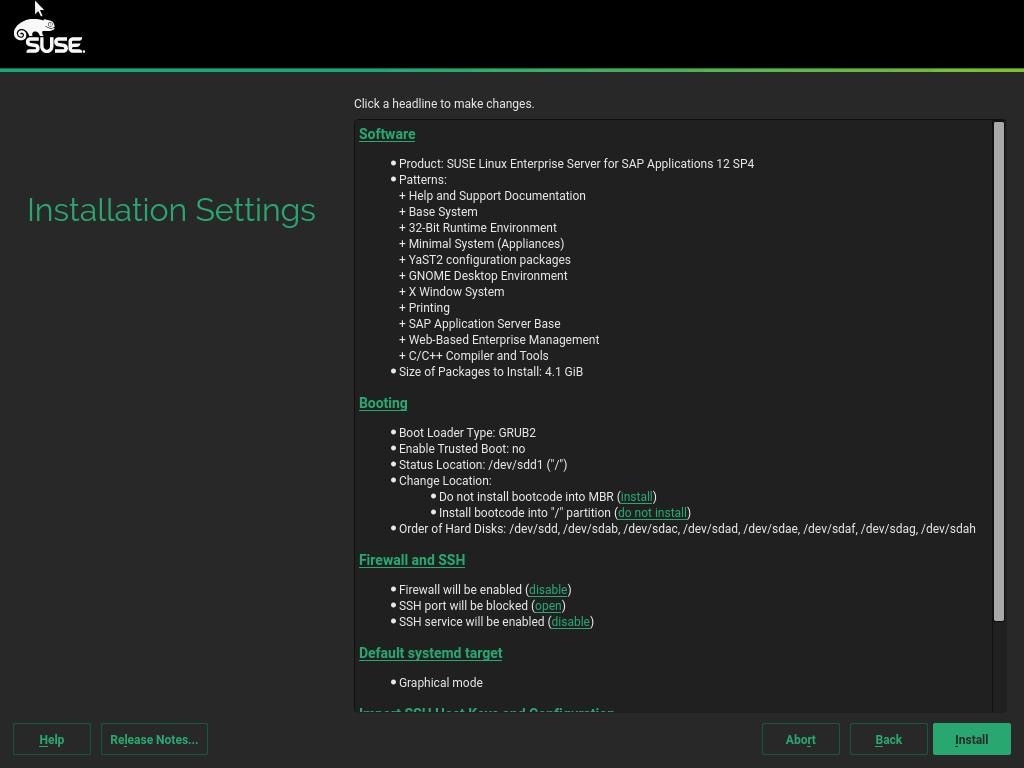

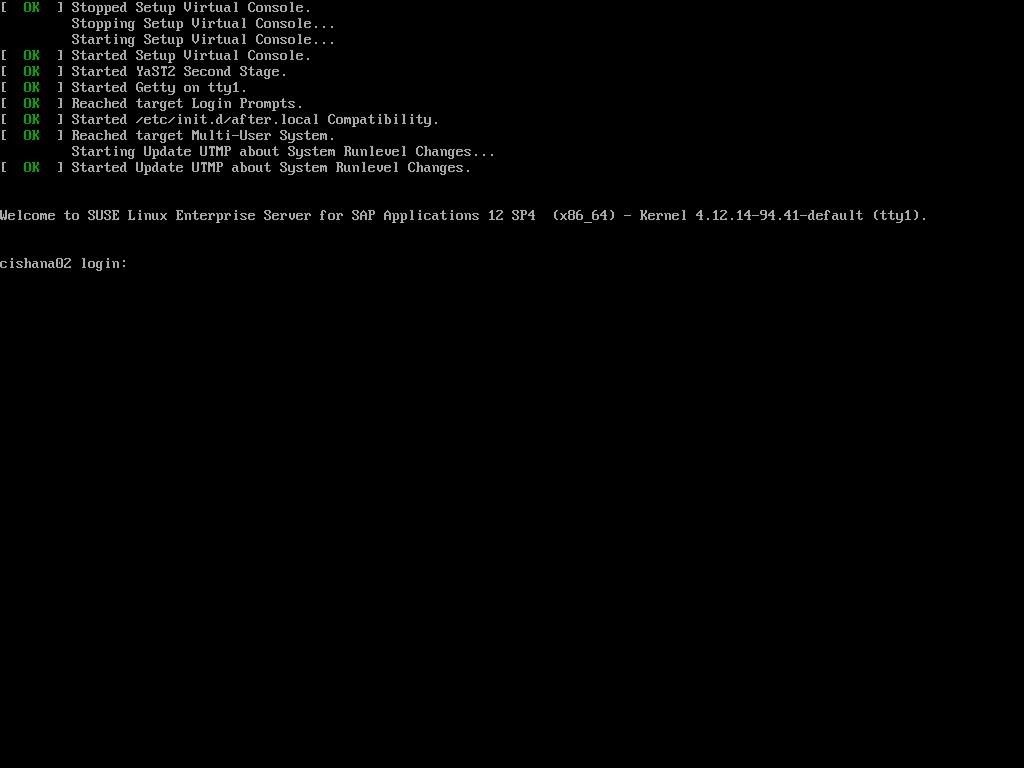

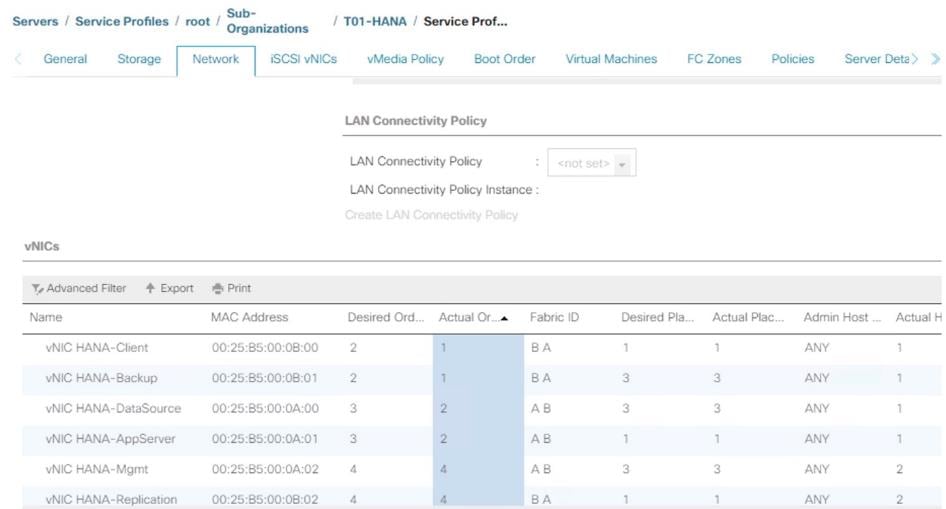

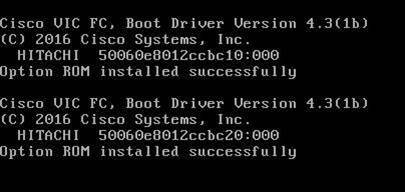

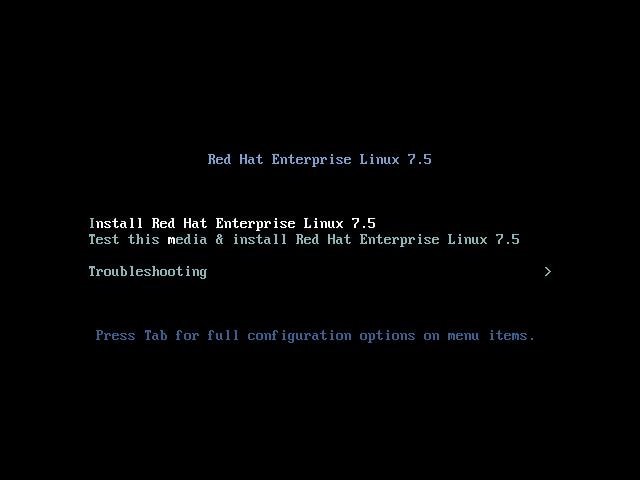

To configure the host ports, follow these steps: