Cisco and Hitachi Adaptive Solutions for SAP HANA Tailored Data Center Integration Design Guide

Last Updated: March 12, 2019

About the Cisco Validated Design Program

The Cisco Validated Design (CVD) program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, go to:

http://www.cisco.com/go/designzone.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

CCDE, CCENT, Cisco Eos, Cisco Lumin, Cisco Nexus, Cisco StadiumVision, Cisco TelePresence, Cisco WebEx, the Cisco logo, DCE, and Welcome to the Human Network are trademarks; Changing the Way We Work, Live, Play, and Learn and Cisco Store are service marks; and Access Registrar, Aironet, AsyncOS, Bringing the Meeting To You, Catalyst, CCDA, CCDP, CCIE, CCIP, CCNA, CCNP, CCSP, CCVP, Cisco, the Cisco Certified Internetwork Expert logo, Cisco IOS, Cisco Press, Cisco Systems, Cisco Systems Capital, the Cisco Systems logo, Cisco Unified Computing System (Cisco UCS), Cisco UCS B-Series Blade Servers, Cisco UCS C-Series Rack Servers, Cisco UCS S-Series Storage Servers, Cisco UCS Manager, Cisco UCS Management Software, Cisco Unified Fabric, Cisco Application Centric Infrastructure, Cisco Nexus 9000 Series, Cisco Nexus 7000 Series. Cisco Prime Data Center Network Manager, Cisco NX-OS Software, Cisco MDS Series, Cisco Unity, Collaboration Without Limitation, EtherFast, EtherSwitch, Event Center, Fast Step, Follow Me Browsing, FormShare, GigaDrive, HomeLink, Internet Quotient, IOS, iPhone, iQuick Study, LightStream, Linksys, MediaTone, MeetingPlace, MeetingPlace Chime Sound, MGX, Networkers, Networking Academy, Network Registrar, PCNow, PIX, PowerPanels, ProConnect, ScriptShare, SenderBase, SMARTnet, Spectrum Expert, StackWise, The Fastest Way to Increase Your Internet Quotient, TransPath, WebEx, and the WebEx logo are registered trademarks of Cisco Systems, Inc. and/or its affiliates in the United States and certain other countries.

All other trademarks mentioned in this document or website are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (0809R)

© 2019 Cisco Systems, Inc. All rights reserved.

Table of Contents

Cisco Unified Computing System

Cisco UCS Fabric Interconnects

Cisco UCS 2304 XP Fabric Extenders

Cisco UCS 5108 Blade Server Chassis

Cisco Nexus 9000 Series Switch

Hitachi Virtual Storage Platform Storage Systems

Storage Virtualization Operating System RF

Hitachi Virtual Storage Platform G Series and F Series

SAP HANA Tailored Data Center Integration Support

Hardware Requirements for the SAP HANA Database

Scale and Performance Consideration

LUN Multiplicity per HBA and Different Pathing Options

Cisco Nexus 9000 Series vPC Best Practices

Cisco Validated Designs consist of systems and solutions that are designed, tested, and documented to facilitate and improve customer deployments. These designs incorporate a wide range of technologies and products into a portfolio of solutions that have been developed to address the business needs of our customers.

Cisco and Hitachi are working together to deliver a converged infrastructure solution that helps enterprise businesses meet the challenges of today and position themselves for the future. Leveraging decades of industry expertise and superior technology, Cisco and Hitachi Adaptive Solutions for Converged Infrastructure offers a resilient, agile, flexible foundation for today’s businesses. In addition, the Cisco and Hitachi partnership extends beyond a single solution, enabling businesses to benefit from their ambitious roadmap of evolving technologies such as advanced analytics, IoT, cloud, and edge capabilities. With Cisco and Hitachi, organizations can confidently take the next step in their modernization journey and prepare themselves to take advantage of new business opportunities enabled by innovative technology.

This document describes the Cisco and Hitachi Adaptive Solutions for Converged Infrastructure SAP, which is a validated approach for deploying SAP HANA® Tailored Data Center Integration (TDI) environments. This validated design provides an outline for implementing SAP HANA utilizing best practices from Cisco and Hitachi.

The recommended solution architecture is built on Cisco Unified Computing System (Cisco UCS) using the unified software release to support Cisco UCS B-Series blade servers and Cisco UCS 6300 Fabric Interconnects, together with Cisco Nexus 9000 Series switches, Cisco MDS Fibre Channel switches, and Hitachi Virtual Storage Platform F series and G series storage systems. The design also includes validations of both Red Hat Enterprise Linux and SUSE Linux Enterprise Server for SAP HANA.

Introduction

Enterprise data centers need scalable and reliable infrastructure that can be implemented in an intelligent, policy-driven manner. This implementation needs to be easy to use and deliver application agility, so IT teams can provision applications quickly and scale resources up (or down) in minutes.

Cisco and Hitachi Adaptive Solutions for Converged Infrastructure SAP provides a best practice data center architecture built on the collaboration of Hitachi and Cisco to meet the needs of enterprise customers. The solution provides orchestrated efficiency across the data path with an intelligent system that helps anticipate and navigate challenges as you grow. The architecture builds a self-optimizing data center that automatically spreads workloads across devices to ensure consistent utilization and performance. The solution helps organizations plan infrastructure growth effectively and eliminate budgeting guesswork with predictive risk profiles that identify historical trends.

Organizations experience a 5-year ROI of 483% with Cisco UCS Integrated Infrastructure solutions. Businesses experience 46% lower IT infrastructure costs with Cisco UCS Integrated Infrastructure solutions. Organizations can realize a 5-year total business benefit of $13M per organization with Cisco UCS Integrated Infrastructure solutions. The break-even period with Cisco UCS Integrated Infrastructure solutions is seven months. Businesses experience 66% lower ongoing administrative and management costs with Cisco UCS Manager.

This architecture is composed of the Hitachi Virtual Storage Platform (VSP) connecting through the Cisco MDS multilayer switches to Cisco Unified Computing System, and further enabled with the Cisco Nexus family of switches.

Audience

The audience for this document includes, but is not limited to, sales engineers, field consultants, professional services, IT managers, partner engineers, and customers who want to modernize their infrastructure to meet SLAs and business needs at any scale.

Purpose of this Document

A Cisco Validated Design consists of systems and solutions that are designed, tested, and documented to facilitate and improve customer deployments. These designs incorporate a wide range of technologies and products into a portfolio of solutions that have been developed to address the business needs of our customers.

The purpose of this document is to describe Cisco and Hitachi Adaptive Solutions for Converged Infrastructure SAP utilizing Cisco Unified Computing and Hitachi VSP, along with Cisco Nexus and MDS switches. It helps organizations to:

· Work confidently with an unmatched 100% data availability guarantee, minimal downtime, and data loss protection.

· Run mission-critical applications efficiently by independently and automatically scaling compute and storage workloads.

· Monitor SLOs to ensure SLA compliance with integrated alerts for service-level thresholds and anomaly detection.

· Ensure employees have continuous, scalable data access with real-time data mirroring capabilities.

· Unify and automate the control of server, network, and storage components to simplify resource provisioning and maintenance.

· Easily test and implement new technologies that drive the business forward without impacting priorities or workloads.

Solution Summary

Cisco and Hitachi Adaptive Solutions for Converged Infrastructure SAP is a resilient, agile, flexible infrastructure, leveraging the strengths of both Cisco and Hitachi Vantara. The Cisco and Hitachi Adaptive Solutions for Converged Infrastructure SAP data center implementation uses the following components:

· Cisco Unified Computing System

· Cisco Nexus and MDS Family of Switches

· Hitachi VSP Storage Systems

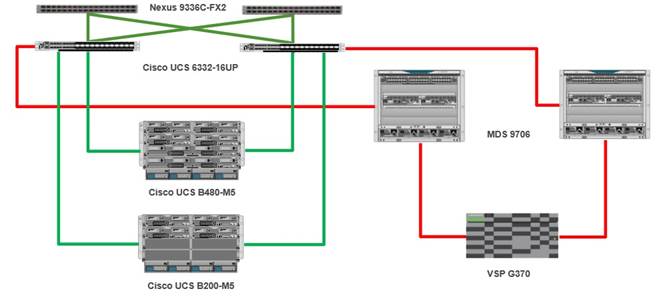

Figure 1 Architecture of Cisco and Hitachi Adaptive Solutions for Converged Infrastructure SAP

These products have been brought together as a validated reference architecture. The components are configured using the configuration and connectivity best practices from both companies to implement a powerful and scalable Infrastructure for SAP HANA in a TDI environment validated for both SUSE Linux Enterprise Server (SLES) and Red Hat Enterprise Linux (RHEL). The specific products listed in this design guide and the accompanying deployment guide have gone through a battery of validation tests confirming functionality and resilience for the components as listed.

This design is presented as a validated reference architecture that covers the specific products used within the Cisco lab. However, equivalent supported products listed in the compatibility matrices published by Cisco and Hitachi can be substituted.

This section provides a technical overview of the compute, network, storage and management components in this solution. For additional information on any of the components covered in this section, see the Solution References section.

Cisco Unified Computing System

Cisco Unified Computing System (Cisco UCS) is a next-generation data center platform that integrates computing, networking, storage access, and virtualization resources into a cohesive system designed to reduce total cost of ownership and increase business agility. The system integrates a low-latency, lossless 10-100 Gigabit Ethernet unified network fabric with enterprise-class, x86-architecture servers. The system is an integrated, scalable, multi-chassis platform with a unified management domain for managing all resources.

Cisco Unified Computing System consists of the following subsystems:

· Compute - The compute piece of the system incorporates servers based on the latest Intel x86 processors. Servers are available in blade and rack form factors, managed by Cisco UCS Manager.

· Network - The integrated network fabric in the system provides a low-latency, lossless, 10/25/40/100 Gbps Ethernet fabric. Networks for LAN, SAN and management access are consolidated within the fabric. The unified fabric uses the innovative Single Connect technology to lower costs by reducing the number of network adapters, switches, and cables. This in turn lowers the power and cooling needs of the system.

· Virtualization - The system unleashes the full potential of virtualization by enhancing the scalability, performance, and operational control of virtual environments. Cisco security, policy enforcement, and diagnostic features are now extended into virtual environments to support evolving business needs.

· Storage access - The Cisco UCS system provides consolidated access to both SAN storage and Network Attached Storage over the unified fabric. This provides customers with storage choices and investment protection. Server administrators can also pre-assign storage-access policies to storage resources, which simplifies storage connectivity and management and increases productivity.

· Management - The system uniquely integrates compute, network and storage access subsystems, enabling it to be managed as a single entity through Cisco UCS Manager software. Cisco UCS Manager increases IT staff productivity by enabling storage, network, and server administrators to collaborate on Service Profiles that define the desired physical configurations and infrastructure policies for applications. Service Profiles increase business agility by enabling IT to automate and provision resources in minutes instead of days.

Cisco UCS Differentiators

Cisco Unified Computing System has revolutionized the way servers are managed in the data center and provides a number of unique differentiators, outlined below:

· Embedded Management — Servers in Cisco UCS are managed by embedded software in the Fabric Interconnects, eliminating the need for any external physical or virtual devices to manage the servers.

· Unified Fabric — Cisco UCS uses a wire-once architecture, where a single Ethernet cable is used from the FI to the server chassis for LAN, SAN and management traffic. Adding compute capacity does not require additional connections. This converged I/O reduces overall capital and operational expenses.

· Auto Discovery — By simply inserting a blade server into the chassis or a rack server to the fabric interconnect, discovery of the compute resource occurs automatically without any intervention.

· Policy Based Resource Classification — Once a compute resource is discovered, it can be automatically classified to a resource pool based on defined policies, which is particularly useful in cloud computing.

· Combined Rack and Blade Server Management — Cisco UCS Manager is hardware form factor agnostic and can manage both blade and rack servers under the same management domain.

· Model based Management Architecture — Cisco UCS Manager architecture and management database is model based, and data driven. An open XML API is provided to operate on the management model which enables easy and scalable integration of Cisco UCS Manager with other management systems.

· Policies, Pools, and Templates — The management approach in Cisco UCS Manager is based on defining policies, pools and templates, instead of cluttered configuration, which enables a simple, loosely coupled, data driven approach in managing compute, network and storage resources.

· Policy Resolution — In Cisco UCS Manager, a tree structure of organizational unit hierarchy can be created that mimics the real-life tenants and/or organization relationships. Various policies, pools and templates can be defined at different levels of organization hierarchy.

· Service Profiles and Stateless Computing — A service profile is a logical representation of a server, carrying its various identities and policies. This logical server can be assigned to any physical compute resource as long as it meets the resource requirements. Stateless computing enables procurement of a server within minutes, which used to take days in legacy server management systems.

· Built-in Multi-Tenancy Support — The combination of a profiles-based approach using policies, pools and templates and policy resolution with organizational hierarchy to manage compute resources makes Cisco UCS Manager inherently suitable for multi-tenant environments, in both private and public clouds.

Cisco UCS Manager

Cisco UCS Manager (UCSM) provides unified, integrated management for all software and hardware components in Cisco UCS. Using Cisco Single Connect technology, it manages, controls, and administers multiple chassis for thousands of virtual machines. Administrators use the software to manage the entire Cisco Unified Computing System as a single logical entity through an intuitive graphical user interface (GUI), a command-line interface (CLI), or a robust application programming interface (API).

Cisco UCS Manager is embedded into the Cisco UCS Fabric Interconnects and provides a unified management interface that integrates server, network, and storage. Cisco UCS Manager performs auto-discovery to detect, inventory, manage, and provision system components that are added or changed. It offers a comprehensive XML API for third-party integration, exposes thousands of integration points, and facilitates custom development for automation, orchestration, and new levels of system visibility and control.
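As a rough illustration of what third-party integration with the XML API can look like, the following Python sketch builds a login request document and extracts the session cookie from a response. It assumes the `aaaLogin` method with its `inName`, `inPassword`, and `outCookie` attributes as documented for the Cisco UCS Manager XML API. The helper names and the sample response are hypothetical, nothing here contacts a real system, and the details should be confirmed against the XML API reference for your release.

```python
import xml.etree.ElementTree as ET


def build_login_request(username: str, password: str) -> str:
    """Return an aaaLogin request document as a string (hypothetical helper)."""
    elem = ET.Element("aaaLogin", {"inName": username, "inPassword": password})
    return ET.tostring(elem, encoding="unicode")


def extract_cookie(response_xml: str) -> str:
    """Pull the session cookie out of an aaaLogin response document."""
    root = ET.fromstring(response_xml)
    cookie = root.get("outCookie")
    if not cookie:
        raise ValueError("login response did not contain an outCookie attribute")
    return cookie


# Made-up response for illustration only; a real response carries more attributes.
SAMPLE_RESPONSE = '<aaaLogin response="yes" outCookie="example-cookie" />'

if __name__ == "__main__":
    print(build_login_request("admin", "example-password"))
    print(extract_cookie(SAMPLE_RESPONSE))
```

In a real integration the request string would be sent over HTTPS to the management address, and the returned cookie would accompany subsequent queries.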

Cisco UCS Fabric Interconnects

The Cisco UCS Fabric Interconnects (FIs) provide a single point for connectivity and management for the entire Cisco UCS system. Typically deployed as an active-active pair, the system’s fabric interconnects integrate all components into a single, highly-available management domain controlled by the Cisco UCS Manager. Cisco UCS FIs provide a single unified fabric for the system, with low-latency, lossless, cut-through switching that supports LAN, SAN and management traffic using a single set of cables.

The 3rd generation (6300) Fabric Interconnects deliver options for both high workload density and high port count, with both supporting either Cisco UCS B-Series blade servers or Cisco UCS C-Series rack mount servers. The Fabric Interconnect model featured in this design is the Cisco UCS 6332-16UP Fabric Interconnect, a 1RU 40GbE/FCoE switch and 1/10 Gigabit Ethernet, FCoE and FC switch offering up to 2.24 Tbps throughput. The switch has 24x40Gbps fixed Ethernet/FCoE ports with unified ports providing 16x1/10Gbps Ethernet/FCoE or 4/8/16Gbps FC ports. This model is aimed at FC storage deployments requiring high performance 16Gbps FC connectivity to Cisco MDS switches or FC direct attached storage.
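The 2.24 Tbps figure can be reproduced from the port counts above. The short Python sketch below is only a sanity check. It assumes the unified ports run at 10 Gbps Ethernet and that the headline number counts both directions of every port (full duplex), neither of which is stated explicitly in this guide.

```python
# Port counts and speeds are taken from the description above.
FIXED_40G_PORTS = 24
FIXED_PORT_GBPS = 40
UNIFIED_PORTS = 16
UNIFIED_PORT_GBPS = 10  # assumption: unified ports used as 10 Gbps Ethernet


def aggregate_throughput_tbps(duplex_factor: int = 2) -> float:
    """Sum the line rate of all ports; duplex_factor=2 counts both directions."""
    one_way_gbps = (FIXED_40G_PORTS * FIXED_PORT_GBPS
                    + UNIFIED_PORTS * UNIFIED_PORT_GBPS)
    return one_way_gbps * duplex_factor / 1000


if __name__ == "__main__":
    print(aggregate_throughput_tbps())
```

With the default arguments, the one-way total is 1,120 Gbps, which doubles to 2,240 Gbps, or 2.24 Tbps.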

Figure 2 Cisco UCS 6332-16UP Fabric Interconnect

See the Solution References section for additional information on Fabric Interconnects.

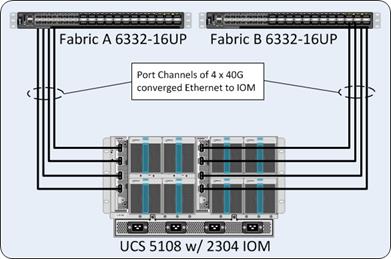

Cisco UCS 2304 XP Fabric Extenders

The Cisco UCS Fabric Extenders (FEX), or I/O Modules (IOMs), multiplex and forward all traffic from servers in a blade server chassis to a pair of Cisco UCS Fabric Interconnects over 40Gbps unified fabric links. All traffic, including traffic between servers on the same chassis or on different chassis, is forwarded to the parent fabric interconnect where Cisco UCS Manager runs, managing the profiles and policies for the servers. FEX technology was developed by Cisco. Up to two FEXs can be deployed in a chassis.

The Cisco UCS 2304 Fabric Extender has four 40 Gigabit Ethernet, FCoE-capable, Quad Small Form-Factor Pluggable (QSFP+) ports that connect the blade chassis to the fabric interconnect. Each Cisco UCS 2304 has four 40 Gigabit Ethernet ports connected through the midplane to each half-width slot in the chassis. Typically configured in pairs for redundancy, two fabric extenders provide up to 320 Gbps of I/O to the chassis.
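The 320 Gbps figure follows directly from the uplink counts in this paragraph. The minimal Python sketch below shows how chassis I/O scales with the number of fabric extenders and cabled uplinks. The function name and parameters are illustrative and not part of any Cisco tool.

```python
def chassis_uplink_gbps(fex_count: int, uplinks_per_fex: int = 4,
                        uplink_gbps: int = 40) -> int:
    """Aggregate uplink bandwidth from a chassis to the fabric interconnects."""
    if not 1 <= fex_count <= 2:
        raise ValueError("a chassis holds one or two fabric extenders")
    if not 1 <= uplinks_per_fex <= 4:
        raise ValueError("the Cisco UCS 2304 has four uplink ports")
    return fex_count * uplinks_per_fex * uplink_gbps


if __name__ == "__main__":
    print(chassis_uplink_gbps(2))                     # both FEXs, all uplinks cabled
    print(chassis_uplink_gbps(2, uplinks_per_fex=2))  # both FEXs, two uplinks each
```

Cabling fewer than four uplinks per fabric extender reduces the available bandwidth proportionally, which is why the figure is quoted as "up to" 320 Gbps.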

Figure 3 Cisco UCS 2304 XP Fabric Extenders

See the Solution References section for additional information on Fabric Extenders.

Cisco UCS 5108 Blade Server Chassis

The Cisco UCS 5108 Blade Server Chassis is a fundamental building block of the Cisco Unified Computing System, delivering a scalable and flexible blade server architecture. The Cisco UCS blade server chassis uses an innovative unified fabric with fabric-extender technology to lower TCO by reducing the number of network interface cards (NICs), host bus adapters (HBAs), switches, and cables that need to be managed, cooled, and powered. It is a 6-RU chassis that can house up to 8 x half-width or 4 x full-width Cisco UCS B-series blade servers. A passive mid-plane provides up to 80Gbps of I/O bandwidth per server slot and up to 160Gbps for two slots (full-width). The rear of the chassis contains two I/O bays to house a pair of Cisco UCS 2000 Series Fabric Extenders to enable uplink connectivity to FIs for both redundancy and bandwidth aggregation.
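As a sketch of the slot arithmetic described above, the following Python function checks whether a mix of half-width and full-width blades fits in one chassis and reports the upper bound on midplane bandwidth per blade. It is a simplification that assumes a full-width blade consumes exactly two half-width slots and ignores any physical placement rules. The names are hypothetical.

```python
HALF_WIDTH_SLOTS = 8   # per chassis, from the description above
GBPS_PER_SLOT = 80     # midplane I/O per half-width slot


def plan_chassis(half_width: int, full_width: int) -> dict:
    """Return slot usage and per-blade midplane bandwidth for one chassis."""
    if half_width < 0 or full_width < 0:
        raise ValueError("blade counts must be non-negative")
    slots_used = half_width + 2 * full_width  # a full-width blade spans two slots
    return {
        "slots_used": slots_used,
        "fits": slots_used <= HALF_WIDTH_SLOTS,
        "half_width_blade_gbps": GBPS_PER_SLOT,
        "full_width_blade_gbps": 2 * GBPS_PER_SLOT,
    }


if __name__ == "__main__":
    print(plan_chassis(half_width=4, full_width=2))
    print(plan_chassis(half_width=2, full_width=4))
```

The first call describes a chassis that is exactly full, while the second asks for ten slots and is reported as not fitting.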

Figure 4 Cisco UCS 5108 Blade Server Chassis

See the Solution References section for additional information on Cisco UCS Blade Server Chassis.

Cisco UCS VIC

The Cisco UCS blade server has various Converged Network Adapter (CNA) options.

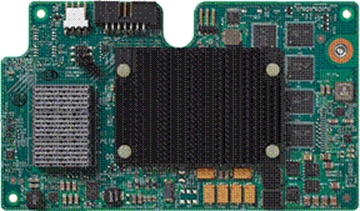

Cisco VIC 1340 Virtual Interface Card

The Cisco UCS Virtual Interface Card (VIC) 1340 is a 2-port 40-Gbps Ethernet or dual 4 x 10-Gbps Ethernet, Fibre Channel over Ethernet (FCoE)-capable modular LAN on motherboard (mLOM) designed exclusively for the Cisco UCS B-Series Blade Servers. When used in combination with an optional port expander, the Cisco UCS VIC 1340 is enabled for two ports of 40-Gbps Ethernet.

Figure 5 Cisco UCS 1340 VIC Card

The Cisco UCS VIC 1340 enables a policy-based, stateless, agile server infrastructure that can present over 256 PCIe standards-compliant interfaces to the host that can be dynamically configured as either network interface cards (NICs) or host bus adapters (HBAs). In addition, the Cisco UCS VIC 1340 supports Cisco Data Center Virtual Machine Fabric Extender (VM-FEX) technology, which extends the Cisco UCS fabric interconnect ports to virtual machines, simplifying server virtualization deployment and management.

Cisco VIC 1380 Virtual Interface Card

The Cisco UCS Virtual Interface Card (VIC) 1380 is a dual-port 40-Gbps Ethernet or dual 4 x 10-Gbps Ethernet, Fibre Channel over Ethernet (FCoE)-capable mezzanine card designed exclusively for the M5 generation of Cisco UCS B-Series Blade Servers. The card enables a policy-based, stateless, agile server infrastructure that can present over 256 PCIe standards-compliant interfaces to the host that can be dynamically configured as either network interface cards (NICs) or host bus adapters (HBAs). In addition, the Cisco UCS VIC 1380 supports Cisco Data Center Virtual Machine Fabric Extender (VM-FEX) technology, which extends the Cisco UCS fabric interconnect ports to virtual machines, simplifying server virtualization deployment and management.

Figure 6 Cisco UCS 1380 VIC Card

Cisco UCS B200 M5 Servers

The enterprise-class Cisco UCS B200 M5 Blade Server extends the Cisco UCS portfolio in a half-width blade form-factor. This M5 server uses the latest Intel® Xeon Scalable processors with up to 28 cores per processor, 3TB of RAM (using 24 x 128GB DIMMs), 2 drives (SSD, HDD or NVMe), 2 GPUs and 80Gbps of total I/O to each server. It supports the Cisco VIC 1340 adapter to provide 40Gb FCoE connectivity to the unified fabric.

Figure 7 Cisco UCS B200 M5 Blade Server

For more information about Cisco UCS B-series servers, see the Solution References section.

Cisco UCS B480 M5 Servers

The enterprise-class Cisco UCS B480 M5 Blade Server delivers market-leading performance, versatility, and density without compromise for memory-intensive mission-critical enterprise applications and virtualized workloads, among others. The Cisco UCS B480 M5 is a full-width blade server supported by the Cisco UCS 5108 Blade Server Chassis.

The Cisco UCS B480 M5 Blade Server offers four Intel Xeon Scalable CPUs (up to 28 cores per socket) and up to 6 TB of memory using 48 x 128-GB DIMMs. It provides five mezzanine adapters and support for up to four GPUs, and it supports the Cisco UCS Virtual Interface Card (VIC) 1340 modular LAN on motherboard (mLOM) and the dual-port 40-Gbps Ethernet Cisco UCS VIC 1380.
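The memory maximums quoted for the B200 M5 and B480 M5 follow from the DIMM counts. The brief Python sketch below makes the arithmetic explicit, assuming 1 TB is counted as 1,024 GB. The function name is illustrative.

```python
def max_memory_tb(dimm_slots: int, dimm_gb: int = 128) -> float:
    """Maximum memory in TB for a fully populated server (1 TB = 1024 GB)."""
    if dimm_slots <= 0 or dimm_gb <= 0:
        raise ValueError("slot count and DIMM size must be positive")
    return dimm_slots * dimm_gb / 1024


if __name__ == "__main__":
    print("B200 M5:", max_memory_tb(24), "TB")
    print("B480 M5:", max_memory_tb(48), "TB")
```

The same function can be used to estimate capacity with smaller DIMMs, for example 48 x 64 GB, when sizing a node below the maximum configuration.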

Figure 8 Cisco UCS B480 M5 Blade Server

Cisco Nexus 9000 Series Switch

The Cisco Nexus 9000 Series Switches offer both modular and fixed 10/40/100 Gigabit Ethernet switch configurations with scalability up to 60 Tbps of non-blocking performance with less than five-microsecond latency, wire speed VXLAN gateway, bridging, and routing support.

The Nexus featured in this design is the Nexus 9336C-FX2 implemented in NX-OS standalone mode (Figure 9).

Figure 9 Cisco Nexus 9336C-FX2

The Nexus 9336C-FX2 implements Cisco Cloud Scale ASICs, providing flexible, high port density and intelligent buffering, along with built-in analytics and telemetry. Supporting either Cisco ACI or NX-OS, the Nexus delivers a powerful 40/100Gbps platform offering up to 7.2 Tbps of bandwidth in a compact 1RU TOR switch.

Cisco MDS 9000 Series Switch

The Cisco MDS 9000 family of multilayer switches provides a diverse range of storage networking platforms, allowing you to build a highly scalable storage network with multiple layers of network and storage management intelligence. Fixed and modular models implement 2-32Gbps FC, 10-40Gbps FCoE/FCIP, and up to 48 Tbps of switching bandwidth.

The MDS 9706 Multilayer Director is featured in this design as one of the options to use within the Cisco MDS family (Figure 10).

Figure 10 Cisco MDS 9706 Switch

This six-slot switch presents a modular, redundant supervisor design, supporting FC and FCoE line card modules, SAN extension capabilities, and NVMe over FC on all ports. The MDS 9706 offers a lower TCO through SAN consolidation, high availability, traffic management and SAN analytics, along with management and monitoring capabilities available through Cisco Data Center Network Manager (DCNM).

Hitachi Virtual Storage Platform Storage Systems

Hitachi Virtual Storage Platform is a highly scalable, true enterprise-class storage system that can virtualize external storage and provide virtual partitioning and quality of service for diverse workload consolidation. The abilities to securely partition port, cache and disk resources, and to mask the complexity of a multivendor storage infrastructure, make Virtual Storage Platform the ideal complement to mission-critical and Tier 1 business applications. VSP delivers the highest uptime and flexibility for block-level storage needs in enterprise environments.

With the addition of flash acceleration technology, a single VSP is now able to service more than 1 million random read IOPS. This extreme scale allows you to increase systems consolidation and virtual machine density by up to 100%, defer capital expenses and operating costs, and improve quality of service for open systems and virtualized applications.

VSP capabilities ensure that businesses can meet service level agreements (SLAs) and stay on budget by offering the following:

· The industry's only 100% uptime warranty

· 3D scalable design

· 40 percent higher density

· 40 percent less power required

· Industry's leading virtualization

· Nondisruptive data migration

· Fewer administrative resources

· Resilient performance, less risk

The Hitachi Virtual Storage Platform G series family enables the seamless automation of the data center. It has a broad range of efficiency technologies that deliver maximum value while making ongoing costs more predictable. You can focus on strategic projects and consolidate more workloads while using a wide range of media choices.

The benefits start with Hitachi Storage Virtualization Operating System RF. This includes an all new enhanced software stack that offers up to three times greater performance than our previous midrange models, even as data scales to petabytes.

Virtual Storage Platform G series offers support for containers to accelerate cloud-native application development. Provision storage in seconds and provide persistent data availability, all while being orchestrated by industry-leading container platforms. Move these workloads into an enterprise production environment seamlessly, saving money while reducing support and management costs.

Storage Virtualization Operating System RF

Hitachi Storage Virtualization Operating System (SVOS) RF abstracts information from storage systems, virtualizes and pools available storage resources, and automates key data management functions such as configuration, mobility, optimization, and protection. This unified virtual environment enables you to maximize the utilization and capabilities of your storage resources while at the same time reducing operations overhead and risk. Standards-compatible for easy integration into IT environments, storage virtualization and management capabilities provide the utmost agility and control, helping you build infrastructures that are continuously available, automated, and agile.

SVOS RF is the latest version of SVOS. Flash performance is optimized with a patented flash-aware I/O stack, which accelerates data access. Adaptive inline data reduction increases storage efficiency while enabling a balance of data efficiency and application performance. Industry-leading storage virtualization allows SVOS RF to use third-party all-flash and hybrid arrays as storage capacity, consolidating resources for a higher ROI and providing a high-speed front-end to slower, less predictable arrays.

SVOS RF provides the foundation for superior storage performance, high availability, and IT efficiency. The enterprise-grade capabilities in SVOS RF include centralized management across storage systems and advanced storage features, such as active-active data centers and online migration between storage systems without user or workload disruption.

The features of SVOS RF include the following:

· Advanced efficiency providing user-selectable data reduction

· External storage virtualization

· Thin provisioning and automated tiering

· Flash performance acceleration

· Global-active device for distributed environments

· Deduplication and compression of data stored on internal flash drives

· Storage service-level controls

· Data-at-rest encryption

· Performance instrumentation across multiple storage platforms

· Centralized storage management

- Simplified: Hitachi Storage Advisor

- Advanced and powerful: Hitachi Command Suite, Command Control Interface

- For organizations that have their own management toolset, we include standards-based application program interfaces (REST APIs) that centralize administrative operations on a preferred management application.

Hitachi Virtual Storage Platform G Series and F Series

Based on Hitachi's industry-leading storage technology, the all-flash Hitachi Virtual Storage Platform F350, F370, F700, and F900 and the Hitachi Virtual Storage Platform G350, G370, G700, and G900 include a range of versatile, high-performance storage systems that deliver flash-accelerated scalability, simplified management, and advanced data protection.

Key features of the Hitachi Virtual Storage Platform Fx00 models and Gx00 models include:

· Up to 2.4M IOPS performance

· 100% data-availability guarantee

· AI optimized operations

· Cloud optimization

· Enhanced integration for VMware, Windows, and Oracle environments

· Advanced active-active clustering, replication, and snapshots

· Active flash tiering and groundbreaking flash modules

With many enterprises implementing both private and public cloud services as part of their overall IT strategy, the ability to take advantage of this hybrid data migration solution is critical. The data migrator to cloud feature enables policy-driven, user-transparent, and automatic file tiering of less used (cold) files from unified models to private clouds, such as Hitachi Content Platform, and public clouds, such as Amazon S3 or Microsoft Azure. This approach frees up storage resources for more frequently accessed applications for Tier 1 storage, thus reducing overall storage expenditures.

Hitachi Accelerated Flash (HAF) storage delivers best-in-class performance and efficiency in the Hitachi VSP F series and VSP G series storage systems. HAF features patented flash module drives (FMDs) that are rack-optimized with a highly dense design that delivers greater than 338 TB effective capacity per 2U tray based on a typical 2:1 compression ratio. IOPS performance yields up to five times better results than that of enterprise solid-state drives (SSDs), resulting in leading performance, lowest bit cost, highest capacity, and extended endurance. HAF integrated with SVOS enables leading, real-application performance, lower effective cost, and superior consistent response times. Running on VSP F Series and G Series, HAF with SVOS RF enables transactions to execute with sub-millisecond response times even at petabyte scale.

· Key Features - HAF delivers outstanding value compared to enterprise SSDs. When compared to small-form-factor 1.92-TB SSDs, the HAF drives deliver better performance and response time.

· Second- and Third-generation Flash Modules - The FMD HD drives are designed to support concurrent, large I/O enterprise workloads and enable hyper scale efficiencies. At their core is an advanced embedded multicore flash controller that increases the performance of multilayer cell (MLC) flash to levels that exceed those achieved by more expensive single-level cell (SLC) flash SSDs. Their inline compression offload engine and enhanced flash translation layer empower the drives to deliver up to 80% data reduction (typically 2:1) at 10 times the speed of competing drives. With more raw capacity and inline, no-penalty compression, these drives enable better performance than the SSDs.

The architecture of the Hitachi Virtual Storage Platform Fx00 models and Gx00 models accommodates scalability to meet a wide range of capacity and performance requirements. The storage systems can be configured with the desired number and types of front-end module features for attachment to a variety of host processors. All drive and cache upgrades can be performed without interrupting user access to data, allowing you to hot add components as you need them for pay-as-you-grow scalability.

The Hitachi Virtual Storage Platform Fx00 models and Gx00 models have dual controllers that provide the interface to a data host. Each controller contains its own processor, dual in-line cache memory modules (DIMMs), cache flash memory (CFM), battery, and fans, and is provided with an Ethernet connection for out-of-band management using Hitachi Device Manager - Storage Navigator. If the data path through one controller fails, all drives remain available to hosts using a redundant data path through the other controller. The Hitachi Virtual Storage Platform Fx00 models and Gx00 models allow a defective controller to be replaced.

The VSP G350, G370, G700, and G900 models support a variety of drives. The G350 and G370 support HDDs and SSDs. The G700 and G900 additionally support the FMD HD drives that are the foundation of the VSP F700 and F900 models.

The storage systems allow defective drives to be hot swapped without interrupting data availability. A hot spare drive can be configured to replace a failed drive automatically, securing the fault-tolerant integrity of the logical drive. Self-contained, hardware-based RAID logical drives provide maximum performance in compact external enclosures.

The VSP F350, F370, F700, F900 all-flash arrays bring together all-flash storage and the simplicity of built-in automation software with the proven resiliency and performance of Hitachi VSP technology. The all-flash arrays offer up to 2.4 million IOPS to meet the most demanding application requirements.

Easy-to-use replication management is included with the all-flash arrays with optional synchronous and asynchronous replication available for complete data protection. The all-flash arrays range in storage capacity from 1.4 PB (raw) up to 8.7 PB effective flash capacity and provide an all-flash solution that works seamlessly with other Hitachi infrastructure products through common management software and rich automation tools.

Hitachi Virtual Storage Platform Fx00 models and Gx00 models work with a service processor (SVP). The SVP provides out‑of‑band configuration and management of the storage system and collects performance data for key components to enable diagnostic testing and analysis.

The SVP is available as a physical device provided by Hitachi Vantara or as a software application:

· The physical SVP is a 1U management server that runs Windows Embedded Standard 10.

· The SVP software application is installed on a customer-supplied server and runs on a customer-supplied version of Windows.

SAP HANA Tailored Data Center Integration Support

SAP increases flexibility and provides an alternative to SAP HANA appliances with SAP HANA® Tailored Data Center Integration (TDI), which is currently in five phases. TDI covers many kinds of virtualization, network, and storage technology. Understanding the possibilities and requirements of an SAP HANA TDI environment is crucial.

SAP provides documentation on SAP HANA TDI environments that explains the five phases of SAP HANA TDI as well as the hardware and software requirements for the entire stack:

· SAP HANA Storage Requirements Whitepaper

· SAP HANA Network Requirements Whitepaper

Hitachi storage units have been certified for SAP HANA TDI and are listed in the SAP Certified and Supported SAP HANA Hardware Directory. Best practices for Hitachi storage systems in TDI environments are available, for example in SAP HANA Tailored Data Center Integration with Hitachi VSP F/G Storage Systems and SVOS RF.

This section explains the SAP HANA system requirements defined by SAP and the architecture of Cisco and Hitachi Adaptive Solutions for Converged Infrastructure SAP.

SAP HANA System

The SAP HANA platform is an in-memory database that allows enterprises to analyze large volumes of data in real time.

An SAP HANA system on a single server (Scale-Up) is the simplest of the installation types. It is possible to run an SAP HANA system entirely on one host and then scale the system up as needed. All data and processes are located on the same server and can be accessed locally. A scale-up deployment requires at minimum one 1-Gb Ethernet access network and one 10-Gb Ethernet storage network.

The SAP HANA Scale-Out option is used if the SAP HANA system does not fit into the main memory of a single server based on the rules defined by SAP. In this method, multiple independent servers are combined to form one system and the load is distributed among them. In a distributed system, each index server is usually assigned to its own host to achieve maximum performance. It is possible to assign different tables to different hosts (partitioning the database), or a single table can be split across hosts (partitioning of tables). SAP HANA Scale-Out supports failover scenarios and high availability. Individual hosts in a distributed system have different roles (master, worker, slave, standby) depending on the task.

Hardware Requirements for the SAP HANA Database

There are hardware and software requirements defined by SAP to run SAP HANA systems. This Cisco Validated Design uses guidelines provided by SAP.

For additional information, go to: http://saphana.com

CPU

SAP HANA 2.0 with Tailored Data Center Integration (TDI) supports servers equipped with Intel Xeon Skylake CPUs with more than 8 cores.

Memory

SAP HANA Scale-Up solution is supported in the following memory configurations:

· Homogeneous, symmetric assembly of dual in-line memory modules (DIMMs); for example, DIMM sizes or speeds should not be mixed

· Maximum use of all available memory channels

· Supported Memory Configuration for SAP NetWeaver Business Warehouse (BW) and DataMart

- 1.5 TB on Cisco B200 M5 Servers with 2 CPUs

- 3 TB on Cisco B480 M5 Servers with 4 CPUs

· Supported Memory Configuration for SAP Business Suite on SAP HANA (SoH)

- 3 TB on Cisco B200 M5 Servers with 2 CPUs

- 6 TB on Cisco B480 M5 Servers with 4 CPUs
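The memory rules above can be expressed as a simple pre-deployment check. The following Python sketch is illustrative only: the function name, the workload keys `BW` and `SoH`, and the conversion of 1 TB to 1024 GB are assumptions made for this example, and the limits are transcribed from the list above.

```python
# Maximum supported memory in TB per (server, workload), from the list above.
# The keys "BW" and "SoH" are shorthand chosen for this sketch.
MAX_MEMORY_TB = {
    ("B200 M5", "BW"): 1.5,
    ("B480 M5", "BW"): 3.0,
    ("B200 M5", "SoH"): 3.0,
    ("B480 M5", "SoH"): 6.0,
}


def check_memory_config(server, workload, dimm_sizes_gb):
    """Return a list of problems; an empty list means the layout passes."""
    problems = []
    # Homogeneous assembly: all DIMMs must have the same size.
    if len(set(dimm_sizes_gb)) > 1:
        problems.append("DIMM sizes are mixed")
    # Assumption: 1 TB is treated as 1024 GB.
    total_tb = sum(dimm_sizes_gb) / 1024
    limit_tb = MAX_MEMORY_TB[(server, workload)]
    if total_tb > limit_tb:
        problems.append(f"{total_tb:g} TB exceeds the {limit_tb:g} TB limit")
    return problems


# Example: 24 x 64 GB DIMMs in a B200 M5 running a BW workload.
print(check_memory_config("B200 M5", "BW", [64] * 24))
```

The check does not cover DIMM speed or memory channel population, which would need inventory data not modeled here.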

Network

An SAP HANA data center deployment can range from a database running on a single host to a complex distributed system. A distributed system can span multiple hosts at a primary site and one or more secondary sites, supporting a distributed multi-terabyte database with full fault and disaster recovery.

SAP HANA has different types of network communication channels to support the different SAP HANA scenarios and setups:

· Client zone. Different clients, such as SQL clients on SAP application servers, browser applications using HTTP/S to the SAP HANA XS server and other data sources (such as BI) need a network communication channel to the SAP HANA database.

· Internal zone. The internal zone covers the communication between hosts in a distributed SAP HANA system as well as the communication used by SAP HANA system replication between two SAP HANA sites.

· Storage zone. Although SAP HANA holds the bulk of its data in memory, the data is also saved in persistent storage locations. In most cases, the preferred storage solution involves separate, externally attached storage subsystem devices that are capable of providing dynamic mount-points for the different hosts, according to the overall landscape. A storage area network (SAN) can also be used for storage connectivity.

Storage

SAP HANA is an in-memory database which stores and processes the bulk of its data in memory. Additionally, it provides protection against data loss by saving the data in persistent storage locations. The choice of the specific storage technology is driven by various requirements like size, performance and high availability.

To use a storage system with the Tailored Data Center Integration option, the storage must be certified for SAP HANA TDI at:

https://www.sap.com/dmc/exp/2014-09-02-hana-hardware/enEN/enterprise-storage.html

All relevant information about storage requirements is documented in this white paper: https://www.sap.com/documents/2015/03/74cdb554-5a7c-0010-82c7-eda71af511fa.html

SAP HANA uses storage for several purposes:

· The SAP HANA installation. This directory tree contains the run-time binaries, installation scripts and other support scripts. In addition, this directory tree contains the SAP HANA configuration files, and is also the default location for storing trace files and profiles. On distributed systems, it is created on each of the hosts.

· Backups: Regularly scheduled backups are written to storage in configurable block sizes up to 64 MB.

· Data: SAP HANA persists a copy of the in-memory data, by writing changed data in the form of so-called Savepoint blocks to free file positions, using I/O operations from 4 KB to 16 MB (up to 64 MB when considering super blocks) depending on the data usage type and number of free blocks. Each SAP HANA service (process) separately writes to its own Savepoint files, every five minutes by default.

· Redo Log. To ensure the recovery of the database with zero data loss in case of faults, SAP HANA records each transaction in the form of a so-called redo log entry. Each SAP HANA service separately writes its own redo-log files. Typical block-write sizes range from 4KB to 1MB.

FileSystem Layout

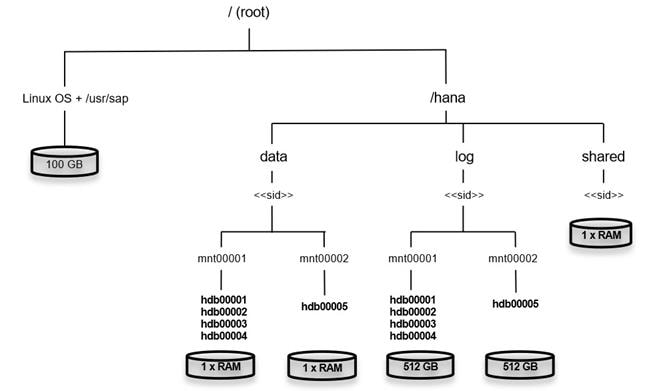

The file system layout, with the storage sizes needed to install and operate SAP HANA, is shown in Figure 11. For the Linux OS installation, 10 GB of disk space is recommended for / (root). Additionally, 50 GB must be provided for /usr/sap, since this is the volume used for SAP software that supports SAP HANA. The location of the OS installation can be combined with the /usr/sap location. In this solution, a 100 GB LUN is created for the OS, which includes /usr/sap.

While installing SAP HANA on a host, specify the mount point for the installation binaries (/hana/shared/<sid>), data files (/hana/data/<sid>) and log files (/hana/log/<sid>), where sid is the three-character system identifier of the SAP HANA installation.
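As an illustration, the three mount points can be derived from the system identifier. This is a minimal Python sketch; the function name is hypothetical, and the validation pattern assumes a SID made of three uppercase alphanumeric characters beginning with a letter.

```python
import re


def hana_mount_points(sid):
    """Build the SAP HANA mount points for a given system identifier."""
    # Assumption: a SID is three uppercase alphanumeric characters
    # and starts with a letter.
    if not re.fullmatch(r"[A-Z][A-Z0-9]{2}", sid):
        raise ValueError(f"invalid SID: {sid!r}")
    return {
        "shared": f"/hana/shared/{sid}",
        "data": f"/hana/data/{sid}",
        "log": f"/hana/log/{sid}",
    }


# Example with a hypothetical SID.
for name, path in hana_mount_points("HDB").items():
    print(name, path)
```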

Figure 11 File System Layout for 2 Node Scale-Out System

The storage sizing for filesystem is based on the amount of memory equipped on the SAP HANA host.

Below is a sample filesystem size for a Single SAP HANA Scale Up System:

| Filesystem | Size |
| --- | --- |
| Root-FS | 100 GB inclusive of space required for /usr/sap |
| /hana/shared | 1 x RAM or 1 TB, whichever is less |
| /hana/data | 1 x RAM |
| /hana/log | ½ of the RAM size for systems <= 256GB RAM and min 512 GB for all other systems |
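The scale-up sizing rules above can be sketched as a small calculation. The Python function below is an illustration, not a sizing tool: the function name is hypothetical, 1 TB is assumed to be 1024 GB, and the log volume for larger systems is set to the stated 512 GB minimum.

```python
def scale_up_sizes_gb(ram_gb):
    """Return sample filesystem sizes in GB for a scale-up host."""
    # /hana/log: half of RAM up to 256 GB, otherwise the 512 GB minimum.
    log_gb = ram_gb / 2 if ram_gb <= 256 else 512
    return {
        "Root-FS": 100,                      # includes /usr/sap
        "/hana/shared": min(ram_gb, 1024),   # 1 x RAM or 1 TB, whichever is less
        "/hana/data": ram_gb,                # 1 x RAM
        "/hana/log": log_gb,
    }


# Example: a host with 1.5 TB (1536 GB) of memory.
print(scale_up_sizes_gb(1536))
```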

With a distributed installation of SAP HANA Scale-Out, each server will have the following:

| Filesystem | Size |
| --- | --- |
| Root-FS | 100 GB inclusive of space required for /usr/sap |
| /hana/data | 1 x RAM |
| /hana/log | ½ of the RAM size for systems <= 256GB RAM and min 512 GB for all other systems |

The installation binaries, trace and configuration files are stored on a shared filesystem, which should be accessible for all hosts in the distributed installation. The size of shared filesystem should be 1 X RAM of a worker node for every 4 nodes in the cluster.
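The shared filesystem rule for scale-out can be worked through as follows. This Python sketch assumes that a partial group of four nodes is rounded up to a full group; the function name is hypothetical.

```python
import math


def scale_out_shared_gb(worker_ram_gb, node_count):
    """Size of the shared filesystem: 1 x worker RAM per 4 nodes."""
    # Assumption: a partial group of four nodes counts as a full group.
    return worker_ram_gb * math.ceil(node_count / 4)


# Example: workers with 1536 GB of RAM each.
print(scale_out_shared_gb(1536, 2))  # one group of up to four nodes
print(scale_out_shared_gb(1536, 5))  # two groups
```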

Operating System

The supported operating systems for SAP HANA are as follows:

· SUSE Linux Enterprise Server for SAP Applications

· Red Hat Enterprise Linux for SAP HANA

High Availability

The infrastructure for an SAP HANA solution must not have a single point of failure. To support high availability, the hardware and software requirements are:

· External storage: Redundant data paths, dual controllers, and a RAID-based configuration are required

· Ethernet switches: Two or more independent switches should be used

SAP HANA Scale-Out comes with an integrated high-availability function. If an SAP HANA system is configured with a standby node, a failed part of SAP HANA will start on the standby node automatically. For automatic host failover, the storage connector API must be properly configured for the implementation and operation of SAP HANA.

For detailed information from SAP see: http://saphana.com or http://service.sap.com/notes

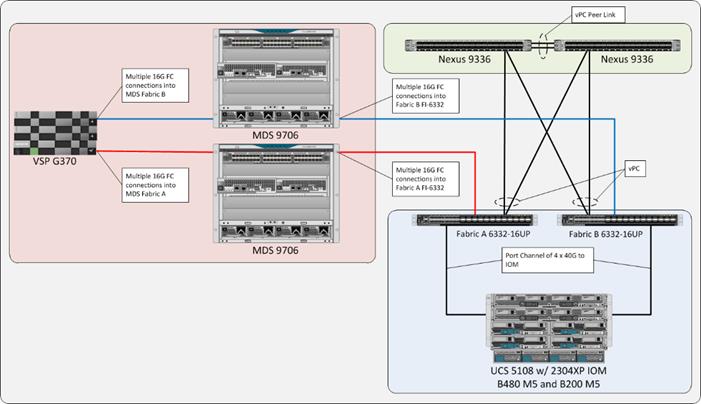

Physical Topology

The Cisco and Hitachi Adaptive Solutions for Converged Infrastructure SAP provides an end-to-end architecture with Cisco Compute, Networking and Hitachi Storage that demonstrate support for multiple SAP HANA workloads with high availability and secure multi-tenancy. The architecture uses Cisco UCS Manager with combined Cisco UCS B-Series Servers and Cisco UCS Fabric Interconnect. The uplink from Cisco UCS Fabric Interconnect is connected to Nexus 9336 switches with High Availability and Failover functionality. The storage traffic between HANA servers and Hitachi Storage flows through Cisco UCS Fabric Interconnect and Cisco MDS Switching. Figure 12 illustrates the physical topology of the Cisco and Hitachi Adaptive Solutions for Converged Infrastructure SAP.

The components of this integrated architecture shown in Figure 12 are:

· Cisco Nexus 9336C-FX2 – 100Gb capable, LAN connectivity to the Cisco UCS compute resources.

· Cisco UCS 6332-16UP Fabric Interconnect – Unified management of Cisco UCS compute, and the compute’s access to storage and networks.

· Cisco UCS B200 M5 – High powered, versatile blade server with two CPUs for SAP HANA

· Cisco UCS B480 M5 – High powered, versatile blade server with four CPUs for SAP HANA

· Cisco MDS 9706 – 16Gbps Fiber Channel connectivity within the architecture, as well as interfacing to resources present in an existing data center.

· Hitachi VSP G370 – Mid-range, high performance storage subsystem with optional all-flash configuration

· Cisco UCS Manager – Management delivered through the Fabric Interconnect, providing stateless compute, and policy driven implementation of the servers managed by it.

Scale and Performance Consideration

Although this is the base validated design, each of the components can be scaled easily to support specific business requirements. Additional servers or even blade chassis can be deployed to increase compute capacity without additional network components. Two Cisco UCS 6332-16UP Fabric Interconnects with 16 x 16G FC unified ports and 24 x 40G ports can support up to:

· 16 x Cisco UCS B-Series B480 M5 Server with 4 Blade Server Chassis

· 32 x Cisco UCS B-Series B200 M5 Server with 4 Blade Server Chassis

· 2 x Hitachi Vantara VSP G370

As per SAP HANA Certified TDI Storage, 16 HANA nodes can be supported on a single Hitachi VSP G370.
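The scale limits above imply four full-width B480 M5 blades or eight half-width B200 M5 blades per chassis, and up to 16 HANA nodes per VSP G370. A minimal Python sketch of the resulting arithmetic follows; the function and constant names are hypothetical and the per-chassis figures are derived from the list above.

```python
import math

# Blades per chassis, derived from the limits listed above
# (16 B480 M5 or 32 B200 M5 servers across 4 chassis).
BLADES_PER_CHASSIS = {"B200 M5": 8, "B480 M5": 4}
HANA_NODES_PER_G370 = 16


def required_hardware(blade_model, hana_nodes):
    """Return (chassis count, VSP G370 count) for a number of HANA nodes."""
    chassis = math.ceil(hana_nodes / BLADES_PER_CHASSIS[blade_model])
    arrays = math.ceil(hana_nodes / HANA_NODES_PER_G370)
    return chassis, arrays


print(required_hardware("B480 M5", 16))
print(required_hardware("B200 M5", 32))
```

The sketch ignores fabric interconnect port counts, so results beyond the limits listed above should not be read as supported configurations.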

Compute Connectivity

Each compute chassis in the design is connected to the managing fabric interconnect with at least two ports per IOM. Ethernet traffic from the upstream network and Fiber Channel frames coming from the VSP are converged within the fabric interconnect and transmitted to the UCS servers through the IOMs as Ethernet and Fiber Channel over Ethernet (FCoE). The IOM links are automatically configured as port channels when a Chassis/FEX Discovery Policy is specified within UCSM.

These connections from the Cisco UCS 6332-16UP Fabric Interconnect to the 2304 IOM are shown in Figure 13.

Figure 13 Cisco UCS 6332-16UP Fabric Interconnect Connectivity to FEX 2304 IOM on Chassis 5108

The two 2304 IOMs are shown with 4 x 40Gbps ports each, delivering an aggregate of 320 Gbps to the chassis.
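The aggregate figure follows from the number of IOMs, uplinks, and link speed. The short Python sketch below reproduces the arithmetic, assuming two IOMs per chassis; the function name and defaults are chosen for this example.

```python
def chassis_bandwidth_gbps(ioms=2, uplinks_per_iom=4, uplink_gbps=40):
    """Aggregate uplink bandwidth from one chassis to the fabric interconnects."""
    return ioms * uplinks_per_iom * uplink_gbps


print(chassis_bandwidth_gbps())                   # four uplinks per IOM
print(chassis_bandwidth_gbps(uplinks_per_iom=2))  # the minimum of two per IOM
```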

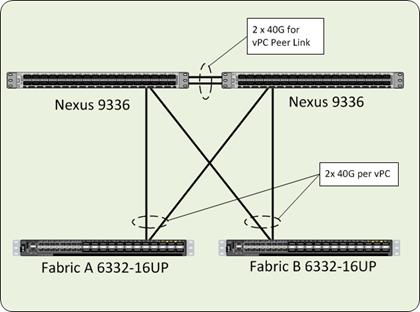

Network Connectivity

The network coming into each of the fabric interconnects is configured as a Port Channel to the respective fabric interconnects but is implemented as Virtual Port Channels (vPC) from the upstream Nexus switches. In the switching environment, the vPC provides the following benefits:

· Allows a single device to use a Port Channel across two upstream devices

· Eliminates Spanning Tree Protocol blocked ports and uses all available uplink bandwidth

· Provides a loop-free topology

· Provides fast convergence if either one of the physical links or a device fails

· Helps ensure high availability of the network

The Cisco UCS 6332-16UP Fabric Interconnects can use 10G or 40G ports to connect to the upstream Nexus switches. In this design, the 40G ports were used for the construction of the port channels that connected to the vPCs (Figure 14).

Figure 14 Cisco UCS 6332-16UP Fabric Interconnect Uplink Connectivity to Nexus 9336-FX2

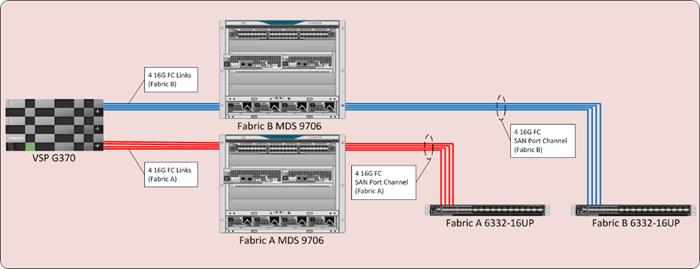

Storage Connectivity

The Hitachi VSP is connected to its associated fabric interconnects through the Cisco MDS 9706. The links between the fabric interconnects and the MDS are configured as SAN Port Channels, with N_Port ID Virtualization (NPIV) enabled on the MDS. This configuration allows:

· Increased aggregate bandwidth between the fabric interconnects and the MDS

· Load balancing between the links

· High availability in the event of a failure of one or more of the links

Figure 15 Storage Connectivity Overview

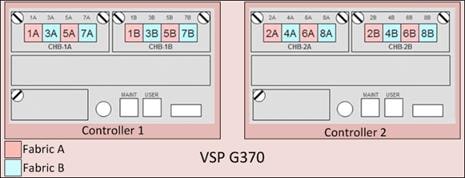

Hitachi VSP FC Port to Fabric Assignments

Each member of the VSP F series and G series comprises multiple controllers and channel board adapters (CHA or CHB) that control connectivity to the fiber channel fabrics. This allows for designing multiple layers of redundancy within the storage architecture, increasing availability and maintaining performance during a failure event. The CHA/CHBs of the VSP Fx00 and Gx00 models each contain up to four individual fiber channel ports, allowing for redundant connections to each fabric in the Cisco UCS infrastructure.

Hitachi VSP Fx00 series and Gx00 series systems have two controllers contained within the storage system. The port to fabric assignments for the VSP G370 used in this design are shown in Figure 16, illustrating multiple connections to each fabric and split evenly between VSP controllers and 32Gb CHBs:

Figure 16 Hitachi VSP G370 Port Assignment

Hitachi VSP LUN Presentation and Path Assignments

Following Hitachi Vantara’s best practices for SAP HANA TDI storage environments, two storage paths were assigned, comprised of one path on each fabric. For each LUN, redundant paths considering controller and cluster failure were assigned.

MDS Zoning

Zoning within the MDS is configured for each host with single initiator, multiple target zones, leveraging the Smart Zoning feature for greater efficiency. The design implements a simple, single VSAN layout per fabric within the MDS; however, configuration of differing VSANs for greater security and tenancy is supported.

Initiators (Cisco UCS hosts) and targets (VSP controller ports) are set up with device aliases within the MDS for easier identification within zoning and flogi connectivity. Zoning and the zonesets containing the zones can be configured through the CLI, and they can also be created and edited with DCNM for a simpler administrative experience.

For more information about zoning and the Smart Zoning feature see the Storage Design Options section.

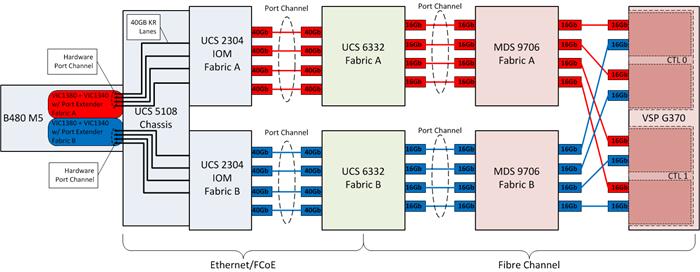

End-to-End Data Path

The architecture in this design is built around fiber channel storage and includes 16G end-to-end FC from the Cisco UCS 6332 to the VSP G370.

The path of traffic between the Cisco UCS 6332-16UP and the VSP G370 is shown in Figure 17:

· Starting from the Cisco UCS B480 M5 server, equipped with a VIC 1340 adapter with Port Expander card and a VIC 1380 adapter, allowing for 40Gb on each side of the fabric (A/B) into the server.

· Pathing through 40Gb KR lanes of the Cisco UCS 5108 Chassis backplane into the Cisco UCS 2304 IOM (Fabric Extender).

· Connecting from each IOM to the Fabric Interconnect with pairs of 40Gb uplinks automatically configured as port channels during chassis association, that carry the FC frames as FCoE along with the Ethernet traffic coming from the chassis blades.

· Continuing from the Cisco UCS 6332-16UP Fabric Interconnects into the Cisco MDS 9706 with multiple 16G FC ports configured as a port channel for increased aggregate bandwidth and link loss resiliency.

· Ending at the Hitachi VSP G370 fiber channel controller ports with dedicated F_Ports on the Cisco MDS 9706 for each N_Port WWPN of the VSP controller, with each fabric evenly split between the controllers, clusters, and CHAs.

Figure 17 Storage Traffic Flow Between Cisco UCS B480-M5 Servers with VSP G370

Compute Design Options

Cisco UCS B-Series

Supporting up to 3TB of memory in a half-width blade format and 6TB of memory in a full-width blade format, these Cisco UCS servers are ideal for any SAP workload. These servers are configured in the design with:

· Diskless SAN boot – Persistent operating system installation, independent of the physical blade for true stateless computing.

· VIC 1340 with Port Expander and VIC 1380 – Provide four 40Gbps connections and support up to 256 PCI Express (PCIe) virtual adapters.

Network Design Options

Management Connectivity

Out-of-band management is handled by an independent switch that could be one currently in place in the customer’s environment. Each physical device has its management interface carried through this out-of-band switch, with in-band management carried as a separate VLAN within the solution to meet SAP HANA network requirements.

Out-of-band configuration for the components configured as in-band could be enabled, but it would require additional uplink ports on the 6332-16UP Fabric Interconnects if the out-of-band management is kept on a separate out-of-band switch. A disjoint layer-2 configuration can then be used to keep the management and data plane networks completely separate. This would require additional vNICs on each server, which are then associated with the management uplink ports.

Jumbo Frames

Jumbo frames are a standard recommendation across Cisco designs to help leverage the increased bandwidth availability of modern networks. To take advantage of the bandwidth optimization and reduced consumption of CPU resources gained through jumbo frames, they were configured at each network level to include the virtual switch and virtual NIC.

This optimization is relevant for VLANs that stay within the pod, and do not connect externally. Any VLANs that are extended outside of the pod should be left at the standard 1500 MTU to prevent drops from any connections or devices not configured to support a larger MTU.
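The MTU rule can be summarized in a few lines. In this Python sketch, the jumbo MTU value of 9000 is an assumption (this guide does not state the value used), and the VLAN names are hypothetical.

```python
STANDARD_MTU = 1500
JUMBO_MTU = 9000  # assumed jumbo frame size; not stated in this guide


def vlan_mtu(extends_outside_pod):
    """Pick the MTU for a VLAN based on whether it leaves the pod."""
    return STANDARD_MTU if extends_outside_pod else JUMBO_MTU


# Hypothetical VLANs: True means the VLAN is extended outside the pod.
vlans = {"hana-internal": False, "hana-client": True}
for name, external in vlans.items():
    print(name, vlan_mtu(external))
```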

Storage Design Options

Hitachi has certified its storage systems for use as SAP HANA Enterprise Storage in SAP HANA TDI environments. This includes all members of the VSP F series and G series. Several design options are available with Hitachi VSP storage arrays in order to service different numbers of SAP HANA nodes. Choose from smaller, mid-range storage, which can service 600,000 IOPS and 2.4PB of capacity, to enterprise-class storage, which can service up to 4.8 million IOPS and 34.6PB of capacity. Table 1 provides a comparison of the different models of VSP available within the family tested in this design.

Table 1 Comparison of VSP Fx00 Models and Gx00 Models

| VSP Model | F350, F370, G350, G370 | F700, G700 | F900, G900 |
| --- | --- | --- | --- |
| Storage Class | Mid-Range | | |
| Maximum IOPS | 600K to 1.2M IOPS; 9 to 12GB/s bandwidth | 1.4M IOPS; 24GB/s bandwidth | 2.4M IOPS; 41GB/s bandwidth |
| Maximum Capacity | 2.8 to 4.3PB (SSD); 2.4 to 3.6PB (HDD) | 6PB (FMD); 13PB (SSD); 11.7PB (HDD) | 8.1PB (FMD); 17.3PB (SSD); 14PB (HDD) |
| Drive Types | 480GB, 1.9, 3.8, 7, 15TB SSD; 600GB, 1.2, 2.4TB 10K HDD; 6, 10TB 7.2K HDD | 3.5, 7, 14TB FMD; 480GB, 1.9, 3.8, 7.6, 15TB SSD; 600GB, 1.2, 2.4TB 10K HDD; 6, 10TB 7.2K HDD | 3.5, 7, 14TB FMD; 1.9, 3.8, 7.6, 15TB SSD; 600GB, 1.2, 2.4TB 10K HDD; 6, 10TB 7.2K HDD |
| Maximum FC Interfaces | 16x (16/32Gb FC) | 64x (16/32Gb FC) | 80x (16/32Gb FC) |

LUN Multiplicity per HBA and Different Pathing Options

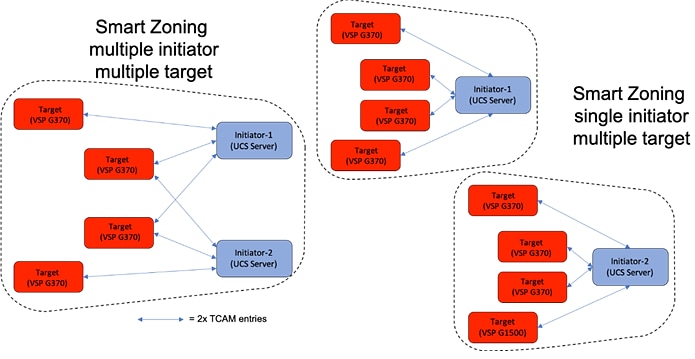

This design implements Single Initiator-Multi Target (SI-MT) zoning in conjunction with single vHBAs per fabric on the Cisco UCS infrastructure. This means that each vHBA within UCS will see multiple paths on their respective fabric to each LUN. Using this design requires the use of Cisco Smart Zoning within the MDS switches.

Different pathing options, including Single Initiator-Single Target (SI-ST), are supported; however, they may reduce availability and performance, especially during a component failure or upgrade scenario within the overall data path.

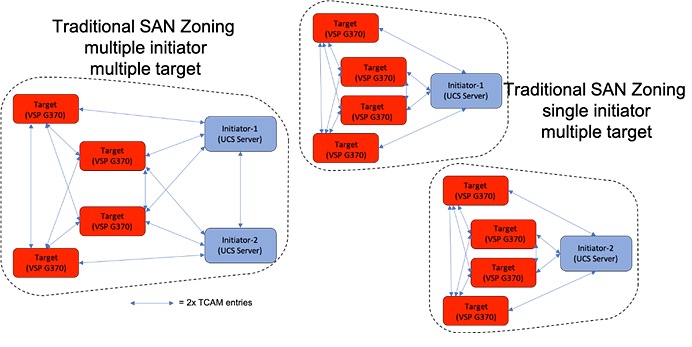

Zoning and Smart Zoning

Zoning is set for single initiator (Cisco UCS host vHBA) with multiple targets (VSP controller ports) to optimize traffic intended to be specific to the host and the storage controller. Using single initiator/multiple target zoning provides reduced administrative overhead versus configuring single initiator/single target zoning, and results in the same SAN switching efficiency when configured with Smart Zoning.

Smart Zoning is configured on the MDS to allow for reduced TCAM (ternary content addressable memory) entries, which are fabric ACL entries of the MDS allowing traffic between targets and initiators. When calculating TCAMs used, two TCAM entries will be created for each connection of devices within the zone. Without Smart Zoning enabled for a zone, targets will have a pair of TCAMs established between each other, and all initiators will additionally have a pair of TCAMs established to other initiators in the zone as illustrated in Figure 18.

Figure 18 Traditional SAN Zoning

With Smart Zoning, targets and initiators are identified, so TCAM entries are only created between targets and initiators within the zone, as illustrated in Figure 19.

Large multiple initiator to multiple target zones can grow rapidly in TCAM usage (roughly with the square of the number of members), especially without Smart Zoning enabled. Single initiator/single target zoning produces the same number of TCAM entries with or without Smart Zoning, and it matches the TCAM entries used by any multiple target zoning method that is configured with Smart Zoning.
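The TCAM accounting described in this section can be worked through with a short calculation. The Python sketch below is a simplified model based only on the rule stated here (two TCAM entries per connection between devices in a zone); the function name is hypothetical and actual switch behavior may differ.

```python
def tcam_entries(initiators, targets, smart_zoning):
    """Estimate TCAM entries for one zone, two entries per device pair."""
    if smart_zoning:
        # Only initiator-to-target pairs are programmed.
        return 2 * initiators * targets
    # Every pair of zone members is programmed, including
    # initiator-to-initiator and target-to-target pairs.
    members = initiators + targets
    return members * (members - 1)


# One host vHBA zoned to four VSP ports (single initiator, multiple target).
print(tcam_entries(1, 4, smart_zoning=False))
print(tcam_entries(1, 4, smart_zoning=True))
# Four single initiator/single target zones for the same host.
print(4 * tcam_entries(1, 1, smart_zoning=False))
```

In this model, the four single target zones and the one Smart Zoning multiple target zone consume the same number of entries, which is the equivalence described above.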

Design Considerations

Cisco Nexus 9000 Series vPC Best Practices

The following Cisco Nexus 9000 design best practices and recommendations were used in this design.

vPC Peer Keepalive Link Considerations

· The recommended options for the vPC peer keepalive link, in order of preference, are a dedicated 1Gbps Layer 3 link, the out-of-band management interface (mgmt0), and lastly routing the peer keepalive over an existing Layer 3 infrastructure between the vPC peers.

· vPC peer keepalive link should not be routed over a vPC peer-link.

· The out-of-band management network is used as the vPC peer keepalive link in this design.

vPC Peer Link Considerations

· Only vPC VLANs are allowed on the vPC peer-links. For deployments that require non-vPC VLAN traffic to be exchanged between vPC peer switches, deploy a separate Layer 2 link for this traffic.

· Only required VLANs are allowed on the vPC peer links and member ports – prune all others to minimize internal resource consumption.

· Ports from different line cards should be used to provide redundancy for vPC peer links if using a modular switch model.

vPC General Considerations

· vPC peer switches deployed using same bridge-id and spanning tree VLAN priority by configuring the peer-switch command on both vPC peer switches. This feature improves convergence and allows peer switches to appear as a single spanning-tree root in the Layer 2 topology.

· vPC role priority specified on both Cisco Nexus peer switches. vPC role priority determines which switch will be primary and which one will be secondary. The device with the lower value will become the primary. By default, this value is 32667. Cisco recommends that the default be changed on both switches. Primary vPC devices are responsible for BPDU and ARP processing. Secondary vPC devices are responsible for shutting down member ports and VLAN interfaces when peer-links fail.

· vPC convergence time of 30s (default) was used to give the routing protocol enough time to converge post-reboot. The default value can be changed using the delay restore <1-3600> and delay restore interface-vlan <1-3600> commands. If used, this value should be changed globally on both peer switches to meet the needs of your deployment.

· vPC peer switches enabled as peer-gateways using peer-gateway command on both devices. Peer-gateway allows a vPC switch to act as the active gateway for packets that are addressed to the router MAC address of the vPC peer allowing vPC peers to forward traffic.

· vPC auto-recovery enabled to provide a backup mechanism in the event of a vPC peer-link failure due to vPC primary peer device failure or if both switches reload but only one comes back up. This feature allows the one peer to assume the other is not functional and restore the vPC after a default delay of 240s. This needs to be enabled on both switches. The time to wait before a peer restores the vPC can be changed using the command: auto-recovery reload-delay <240-3600>.

· Cisco NX-OS can synchronize ARP tables between vPC peers using the vPC peer links. This is done using a reliable transport mechanism that the Cisco Fabric Services over Ethernet (CFSoE) protocol provides. For faster convergence of address tables between vPC peers, ip arp synchronize command was enabled on both peer devices in this design.

vPC Member Link Considerations

· LACP used for port channels in the vPC. LACP should be used when possible for graceful failover and protection from misconfigurations

· LACP mode active-active used on both sides of the port channels in the vPC. LACP active-active is recommended, followed by active-passive mode and manual or static bundling if the access device does not support LACP. Port-channel in mode active-active is preferred as it initiates more quickly than port-channel in mode active-passive.

· LACP graceful-convergence disabled on port-channels going to Cisco UCS FI. LACP graceful-convergence is ON by default and should be enabled when the downstream access switch is a Cisco Nexus device and disabled if it is not.

· Only required VLANs are allowed on the vPC peer links and member ports – prune all others to minimize internal resource consumption.

· Source-destination IP, L4 port, and VLAN are used as the load-balancing hashing algorithm for port-channels. This improves fair usage of all member ports forming the port-channel. The default hashing algorithm is source-destination IP and L4 port.
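The effect of hashing on source-destination IP, L4 port, and VLAN can be illustrated with a short Python sketch. This is a conceptual model only: the function name is hypothetical, and CRC32 is used as a stand-in hash because the actual hashing function of the switch hardware is not described in this document. The point it shows is that each flow always maps to the same member link, while different flows are spread across the members.

```python
import zlib
from collections import Counter


def pick_member(src_ip, dst_ip, src_port, dst_port, vlan, member_count):
    """Map one flow to a member-link index in the range 0..member_count-1.

    CRC32 is an illustrative stand-in, not the hash used by the switch.
    """
    if member_count < 1:
        raise ValueError("member_count must be at least 1")
    key = f"{src_ip}|{dst_ip}|{src_port}|{dst_port}|{vlan}".encode()
    return zlib.crc32(key) % member_count


if __name__ == "__main__":
    # The same flow is always placed on the same member link.
    first = pick_member("10.0.0.1", "10.0.0.2", 40000, 30015, 110, 4)
    second = pick_member("10.0.0.1", "10.0.0.2", 40000, 30015, 110, 4)
    print(first == second)

    # Many flows between the same two hosts, differing only in source port,
    # are distributed over the four member links.
    usage = Counter(
        pick_member("10.0.0.1", "10.0.0.2", port, 30015, 110, 4)
        for port in range(40000, 41000)
    )
    print(sorted(usage.items()))
```

Including the L4 port and VLAN in the key matters most when a few host pairs carry most of the traffic, because a hash on IP addresses alone would place all of their flows on one member link.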

vPC Spanning Tree Considerations

· The spanning tree priority was not modified. Peer-switch (part of the vPC configuration) is enabled, which allows both switches to act as root for the VLANs.

· Loopguard is disabled by default.

· BPDU guard and filtering are enabled by default.

· Bridge assurance is only enabled on the vPC Peer Link.

Storage Design Considerations

The following Hitachi VSP storage design best practices and recommendations were used in this design.

Hitachi Dynamic Provisioning Pools

Hitachi Storage Virtualization Operating System (SVOS) uses Hitachi Dynamic Provisioning (HDP) to provide wide striping and thin provisioning. Dynamic Provisioning provides one or more wide-striping pools across many RAID groups. Each pool hosts one or more dynamic provisioning virtual volumes (DP-VOLs), which are created without initially allocating any physical space. Deploying Dynamic Provisioning avoids the routine issue of hot spots that occur on logical devices (LDEVs).

For SAP HANA TDI environments, the following two dynamic provisioning pools are created with Hitachi Dynamic Provisioning for the storage layout:

· OS_SH_DT_Pool for the following:

- OS volume

- SAP HANA shared volume

- SAP HANA data volume

· LOG_Pool for the following:

- SAP HANA log volume

The validated dynamic provisioning pool layout options, with minimal disks and storage cache on Hitachi Virtual Storage Platform VSP F350, VSP G350, VSP F700, VSP G700 and VSP F900 storage, ensure maximum utilization and optimization at a lower cost than other solutions. The SAP HANA node scalability for the VSP F series and G series is listed in Table 2, including the minimum number of RAID groups for SAS HDD and SSD drives.

Table 2 VSP F Series and G Series SAP HANA Node Scalability

| Storage | Drive Type | Maximum SAP HANA Nodes | Minimal Parity Groups (Data) | Minimal Parity Groups (Log) |
|---|---|---|---|---|
| VSP G350 and VSP G370 | SAS HDDs | 3 | 3 RAID-6 (14D+2P) | 1 RAID-6 (6D+2P) |
| VSP F350, VSP G350, VSP F370 and VSP G370 | SSDs | 16 | 3 RAID-10 (2D+2D) | 3 RAID-10 (2D+2D) |
| VSP G700 | SAS HDDs | 13 | 6 RAID-6 (14D+2P) | 3 RAID-6 (6D+2P) |
| VSP F700 and VSP G700 | SSDs | 34 | 4 RAID-10 (2D+2D) | 5 RAID-10 (2D+2D) |
| VSP F900 and VSP G900 | SSDs | 40 | 4 RAID-10 (2D+2D) | 4 RAID-10 (2D+2D) |

Details about SAP HANA node scalability and configuration guidelines can be found in SAP HANA Tailored Data Center Integration on Hitachi Virtual Storage Platform G Series and VSP F Series with Hitachi Storage Virtualization Operating System.
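The parity group notation in Table 2 translates directly into drive counts. As a rough aid, the following Python sketch parses a layout string such as 14D+2P and multiplies it by the number of parity groups. The function names are hypothetical, and the result covers only the drives inside the listed parity groups; spare drives and any other sizing inputs are outside the scope of this sketch.

```python
import re


def drives_in_group(layout: str) -> tuple:
    """Parse a layout such as '14D+2P' or '2D+2D'.

    Returns (data_drives, redundancy_drives), where the second number is
    the parity drives for RAID-6 or the mirror drives for RAID-10.
    """
    match = re.fullmatch(r"(\d+)D\+(\d+)[DP]", layout)
    if match is None:
        raise ValueError(f"unrecognized layout: {layout}")
    return int(match.group(1)), int(match.group(2))


def total_drives(groups: int, layout: str) -> int:
    """Number of drives in the given count of identical parity groups."""
    data, redundancy = drives_in_group(layout)
    return groups * (data + redundancy)


if __name__ == "__main__":
    # VSP G700 with SAS HDDs, using the values from Table 2.
    data_pool = total_drives(6, "14D+2P")   # 6 x 16 = 96
    log_pool = total_drives(3, "6D+2P")     # 3 x 8 = 24
    print(data_pool, log_pool, data_pool + log_pool)  # prints: 96 24 120
```

For the SSD rows, the same calculation with 2D+2D gives four drives per parity group, of which two hold data and two hold the mirror copy.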

Test Plan

The solution was tested by Cisco and Hitachi Vantara, which provided an installation procedure for both SUSE and Red Hat Linux following best practices from Cisco, Hitachi Vantara, and SAP. All SAP HANA TDI phase 5 requirements were tested and passed for performance and high availability, including:

· Cisco UCS Setup and Configuration

· VSP Setup and Configuration

· MDS Setup and Configuration

· Host Based SAN Boot

· Operating System Configuration for SAP HANA

· Installation of SAP HANA 2.0 SPS03 Rev. 33

· Performance Tests using SAP’s HWCCT

Validated Hardware

Table 3 lists the hardware and software versions used during solution validation. Cisco, Hitachi Vantara, and VMware maintain interoperability matrixes, available in the Appendix, that should be consulted to determine support.

Table 3 Hardware and Software Versions used in this Solution

| Layer | Component | Software |
|---|---|---|
| Network | Nexus 9336C-FX2 | 7.0(3)I7(5a) |
| | MDS 9706 (DS-X97-SF1-K9 & DS-X9648-1536K9) | 8.3(1) |
| Compute | Cisco UCS Fabric Interconnect 6332 | 4.0(1c) |
| | Cisco UCS 2304 IOM | 4.0(1c) |
| | Cisco UCS B200 M5 | 4.0(1c) |
| | Cisco UCS B480 M5 | 4.0(1c) |
| Operating System | SUSE Linux Operating System | SLES for SAP 12 SP 4 |
| | Red Hat Operating System | RHEL 7.5 |
| Storage | Hitachi VSP G370 | 88-02-03-60/00 |

The Cisco and Hitachi Adaptive Solutions for Converged Infrastructure for SAP was built through a partnership between Cisco and Hitachi Vantara to support SAP HANA workloads in TDI environments, and it is validated for both SUSE Linux Enterprise Server and Red Hat Enterprise Linux operating systems. The solution is built utilizing Cisco UCS Blade Servers, Cisco Fabric Interconnects, Cisco Nexus 9000 switches, Cisco MDS switches, and Fibre Channel-attached Hitachi VSP storage. It is designed and validated using compute, network, and storage best practices for high performance, scalability, and resiliency throughout the architecture.

Appendix: Solution References

Compute

Cisco Unified Computing System:

http://www.cisco.com/en/US/products/ps10265/index.html

Cisco UCS 6300 Series Fabric Interconnects:

Cisco UCS 5100 Series Blade Server Chassis:

http://www.cisco.com/en/US/products/ps10279/index.html

Cisco UCS 2300 Series Fabric Extenders:

Cisco UCS B-Series Blade Servers:

http://www.cisco.com/en/US/partner/products/ps10280/index.html

Cisco UCS VIC Adapters:

http://www.cisco.com/en/US/products/ps10277/prod_module_series_home.html

Cisco UCS Manager:

http://www.cisco.com/en/US/products/ps10281/index.html

Network and Management

Cisco Nexus 9000 Series Switches:

http://www.cisco.com/c/en/us/products/switches/nexus-9000-series-switches/index.html

Cisco MDS 9000 Series Multilayer Switches:

Cisco Data Center Network Manager:

Storage

Hitachi Virtual Storage Platform F Series:

Hitachi Virtual Storage Platform G Series:

SAP HANA Tailored Data Center Integration with Hitachi VSP F/G Storage Systems and SVOS RF

Interoperability Matrixes

Cisco UCS Hardware Compatibility Matrix:

https://ucshcltool.cloudapps.cisco.com/public/

Cisco Nexus Recommended Releases for Nexus 9K:

Cisco MDS Recommended Releases:

Cisco Nexus and MDS Interoperability Matrix:

Hitachi Vantara Interoperability:

https://support.hitachivantara.com/en_us/interoperability.html

SAP HANA

SAP HANA Platform on SAP Help Portal

https://help.sap.com/viewer/p/SAP_HANA_PLATFORM

SAP HANA Tailored Data Center Integration Overview

https://www.sap.com/documents/2017/09/e6519450-d47c-0010-82c7-eda71af511fa.html

SAP HANA Tailored Data Center Integration - Frequently Asked Questions

https://www.sap.com/documents/2016/05/e8705aae-717c-0010-82c7-eda71af511fa.html

SAP HANA TDI-Storage Requirements

https://www.sap.com/documents/2015/03/74cdb554-5a7c-0010-82c7-eda71af511fa.html

SAP HANA: Supported Operating Systems

SAP Note 2235581: https://launchpad.support.sap.com/#/notes/2235581

Shailendra Mruthunjaya, Cisco Systems, Inc.

Shailendra is a Technical Marketing Engineer with the Cisco UCS Solutions and Performance Group. Shailendra has over eight years of experience with SAP HANA on the Cisco UCS platform and has designed several SAP landscapes in public and private cloud environments. Currently, his focus is on developing and validating infrastructure best practices for SAP applications on Cisco UCS servers, Cisco Nexus products, and storage technologies.

Dr. Stephan Kreitz, Hitachi Vantara

Stephan Kreitz is a Master Solutions Architect in the Hitachi Vantara Converged Product Engineering Group. Stephan has worked at SAP and in the SAP space for Hitachi Vantara since 2011. He started his career in the SAP space as a Quality specialist at SAP and has worked in multiple roles around SAP at Hitachi Data Systems and Hitachi Vantara. He is currently leading the virtualized SAP HANA solutions at Hitachi Vantara, responsible for Hitachi Vantara’s certifications around SAP and SAP HANA and the technical relationship between Hitachi Vantara and SAP.

Acknowledgements

For their support and contribution to the design, validation, and creation of this Cisco Validated Design, the authors would like to thank:

· Caela Dehaven, Cisco Systems, Inc.

· Erik Lillestolen, Cisco Systems, Inc.

· Pramod Ramamurthy, Cisco Systems, Inc.

· Michael Lang, Cisco Systems, Inc.

· Ramesh Isaac, Cisco Systems, Inc.

· Tim Darnell, Hitachi Vantara

· YC Chu, Hitachi Vantara

· Markus Berg, Hitachi Vantara

· Shinji Osako, Hitachi Vantara

· Yutaro Seino, Hitachi Ltd.
