Deploy FlexPod with Cisco UCS C890 M5 Rack Server for SAP HANA TDI


FlexPod Datacenter is a converged infrastructure developed jointly by Cisco and NetApp. The platform offers a prevalidated data center architecture that incorporates computing, storage, and network design best practices to reduce IT risk and help ensure compatibility among the components. This design document describes how to incorporate the Cisco UCS® C890 M5 Rack Server into the FlexPod solution for SAP HANA Tailored Data Center Integration (TDI).

The dramatic growth in the amount of data in recent years requires larger solutions to store and manage that data. Based on the Second Generation (2nd Gen) Intel® Xeon® Scalable processors, the high-performance Cisco UCS C890 M5 server is well suited for mission-critical SAP HANA implementations. The C890 M5 server as part of an SAP HANA TDI solution with FlexPod Datacenter provides a robust platform for SAP HANA workloads in either single-node (scale up) or multiple-node (scale out) configurations.

FlexPod design and deployment details, including design configuration and associated best practices, are found in our most recent Cisco® Validated Designs for FlexPod at https://www.cisco.com/c/en/us/solutions/design-zone/data-center-design-guides/flexpod-design-guides.html.

This section introduces the FlexPod solution with the Cisco UCS C890 M5 Rack Server for SAP HANA TDI.

Introduction

The SAP HANA in-memory database, acting as the backbone of transactional and analytical SAP workloads, takes advantage of the low-cost main memory, data-processing capabilities of multicore processors, and faster data access. The Cisco UCS C890 M5 Rack Server is equipped with 2nd Gen Intel Xeon Scalable processors and supports implementations using either DDR4 memory modules exclusively or a mixed population of DDR4 and Intel® Optane™ persistent memory.

The eight-socket rack server integrates seamlessly into the FlexPod design and demonstrates the resiliency and ease of deployment of an SAP HANA TDI solution. The NetApp All Flash FAS (AFF) series storage array hosts the SAP HANA persistence partitions and provides a shared file system, thus enabling distributed SAP HANA scale-out deployments. It enables organizations to consolidate their SAP landscape and run SAP application servers as well as multiple SAP HANA databases hosted on the same infrastructure.

Audience

The intended audience for this document includes IT architects and managers, field consultants, sales engineers, professional services staff, partner engineers, and customers who want to deploy SAP HANA on the Cisco UCS C890 M5 Rack Server.

Document reference

This document builds on the Cisco Validated Design for FlexPod for SAP HANA TDI. This document presents the steps for integrating the Cisco UCS C890 M5 Rack Server into FlexPod Datacenter. It provides guidelines for preparing the Cisco UCS C890 server in conjunction with Cisco Nexus® and Cisco MDS Family switches in the FlexPod infrastructure, providing the foundation for SAP HANA deployment.

Solution summary

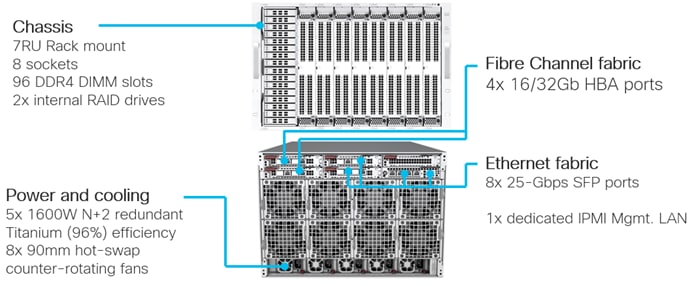

FlexPod with the Cisco UCS C890 M5 Rack Server is a flexible, converged infrastructure solution designed to support mission-critical workloads for which performance and memory size are key attributes. The seven-rack-unit (7RU) form-factor rack server delivers exceptional performance and supports eight 2nd Gen Intel Xeon processors and 96 DIMM slots, which can be populated either with DRAM only or in a mixed configuration with Intel Optane persistent memory (PMem). The default hardware configuration of the Cisco UCS C890 M5 Rack Server for SAP HANA enables the server for SAP HANA TDI deployments.

You can configure FlexPod with the Cisco UCS C890 M5 server according to your demands and use. You can purchase exactly the infrastructure you need for your current application requirements and then can scale up by adding more resources to the FlexPod system or scale out by adding more servers.

The Intelligent Platform Management Interface (IPMI) provides remote access for multiple users and allows you to monitor system health and to manage server events remotely. Cisco Intersight™ management features for the Cisco UCS C890 M5 will be made available through future software updates. Cloud-based IT operations management simplifies SAP environments.

This section provides a technical overview of the computing, network, storage, and management components in this SAP solution. For additional information about any of the components discussed in this section, refer to the “For more information” section at the end of this document.

FlexPod Datacenter

Many enterprises today seek pre-engineered solutions that standardize data center infrastructure and offer the operational efficiency, agility, and scale needed to address cloud and bimodal IT requirements. Their challenges are complexity, diverse application support, efficiency, and risk. FlexPod addresses these challenges with the following features:

● Stateless architecture, providing the capability to expand and adapt to new business requirements

● Reduced complexity, automatable infrastructure, and easily deployed resources

● Robust components capable of supporting high-performance and high-bandwidth virtualized and nonvirtualized applications

● Efficiency through optimization of network bandwidth and inline storage compression with deduplication

● Risk reduction at each level of the design with resiliency built in to each touch point

Cisco and NetApp have partnered to deliver several Cisco Validated Designs, which use best-in-class storage, server, and network components to serve as the foundation for multiple workloads, enabling efficient architectural designs that you can deploy quickly and confidently.

FlexPod components

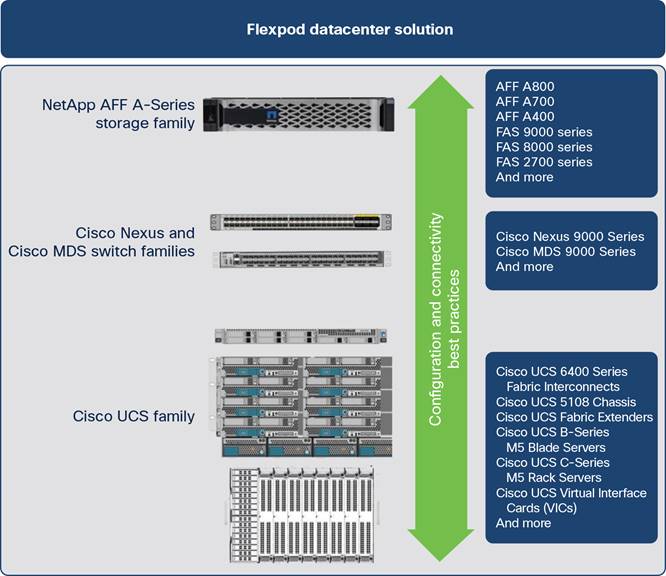

The FlexPod Datacenter is built using the following infrastructure components for computing, network, and storage resources (Figure 1):

● Cisco UCS B-Series Blade Servers and C-Series Rack Servers

● Cisco Nexus and Cisco MDS 9000 Family switches

● NetApp AFF series arrays

Figure 1. FlexPod component families

These components are connected and configured according to the best practices of both Cisco and NetApp to provide an excellent platform for running a variety of enterprise workloads with confidence. FlexPod can scale up for greater performance and capacity (by adding computing, network, and storage resources individually as needed), or it can scale out for environments that require multiple consistent deployments (such as by rolling out additional FlexPod stacks). Each of the component families shown in Figure 1 (Cisco UCS, Cisco Nexus, and NetApp AFF) offers platform and resource options to scale the infrastructure up or down while supporting the same features and functions that are required to meet the configuration and connectivity best practices of FlexPod.

FlexPod Datacenter with the Cisco UCS C890 M5 Rack Server uses the following hardware components:

● Cisco UCS C890 M5 Rack Servers

● High-speed Cisco NX-OS Software–based Cisco Nexus 93180YC-FX3 Switch design to support up to 100 Gigabit Ethernet network connectivity

● High-speed Cisco NX-OS Software–based Cisco MDS 9148T 32-Gbps 48-Port Fibre Channel Switch design to support up to 32-Gbps Fibre Channel connectivity for NetApp AFF arrays

● NetApp AFF A400 array

● Optional Cisco UCS 5108 chassis housing Cisco UCS B-Series Blade Servers or other C-Series rack form-factor nodes

● Optional fourth-generation Cisco UCS 6454 Fabric Interconnects for managed computing resources only

The software components consist of the following:

● IPMI console (IPMI over LAN) management web interface for the Cisco UCS C890 M5 Rack Server

● Cisco Intersight platform to deploy, maintain, and support the FlexPod components

● Cisco Intersight Assist virtual appliance to help connect the NetApp AFF A400 array and VMware vCenter with the Cisco Intersight platform

● VMware vCenter to set up and manage the virtual infrastructure as well as integration of the virtual environment with Cisco Intersight software

Cisco UCS C-Series Rack Servers

The high-performance eight-socket Cisco UCS C890 M5 Rack Server is based on the 2nd Gen Intel Xeon Scalable processors and extends the Cisco Unified Computing System™ (Cisco UCS) platform. It is well suited for mission-critical business scenarios such as medium- to large-size SAP HANA database management systems including high availability and secure multitenancy. It works with virtualized and nonvirtualized applications to increase performance, flexibility, and administrator productivity.

The 7RU server (Figure 2) has eight slots for computing modules and can house a pool of future I/O resources that may include graphics processing unit (GPU) accelerators, disk storage, and Intel Optane PMem. The mixed-memory configuration of DDR4 modules and Intel PMem in App Direct mode supports up to 24 TB of main memory for SAP HANA.

At the top rear of the server are six PCIe slots with one expansion slot available for a customer-specific extension. Eight 25 Gigabit Small Form-Factor Pluggable (SFP) Ethernet uplink ports carry all network traffic to a pair of Cisco Nexus 9000 Series Switches. Four 16- and 32-Gbps Fibre Channel host bus adapter (HBA) ports connect the server to a pair of Cisco MDS 9000 Series Multilayer Switches and to the NetApp AFF series array. Unique to Fibre Channel technology is its deep ecosystem support, making it well suited for large-scale, easy-to-manage FlexPod deployments.

Equipped with internal RAID 1 small-form-factor (SFF) SAS boot drives, the storage- and I/O-optimized enterprise-class rack server delivers industry-leading performance for both virtualized and bare-metal installations. The server offers outstanding flexibility for integration into the data center landscape, with additional capabilities for SAN boot, the capability to boot from LAN, and the capability to use Mellanox FlexBoot multiprotocol remote-boot technology.

Figure 2. Cisco UCS C890 M5 Rack Server

Mellanox ConnectX-4 network controller

The Mellanox super I/O module (SIOM) and the two network interface cards (NICs) provide exceptionally high performance for the most demanding data centers, public and private clouds, and SAP HANA applications. The 25 Gigabit Ethernet NIC is fully backward compatible with 10 Gigabit Ethernet networks and supports bandwidth demands for both virtualized infrastructure in the data center and cloud deployments.

To support the various SAP HANA scenarios and to eliminate single points of failure, two additional 25-Gbps dual-port SFP28 network controller cards extend the capabilities of the baseline configuration of the Cisco UCS C890 M5 server.

A single 25 Gigabit Ethernet NIC is typically sufficient to run an SAP HANA database on a single host. The situation for a distributed system, however, is more complex, with multiple hosts located at a primary site having one or more secondary sites and supporting a distributed multiterabyte database with full fault and disaster recovery. For this purpose, SAP defines different types of network communication channels, called zones, to support the various SAP HANA scenarios and setups. Detailed information about the various network zones is available in the SAP HANA network requirements document.

Broadcom LPe32002 Fibre Channel HBA

The dual-port Broadcom LPe32002 16/32-Gbps Fibre Channel HBA addresses the demanding performance, reliability, and management requirements of modern networked storage systems that use high-performance and low-latency solid-state storage drives or hard-disk drive arrays.

The sixth generation of the Emulex Fibre Channel HBAs with dynamic multicore architecture offers higher performance and more efficient port utilization than other HBAs by applying all application-specific integrated circuit (ASIC) resources to any port as the port requires them.

Intelligent Platform Management Interface

The IPMI console management interface provides remote access for multiple users and allows you to monitor system health and to manage server events remotely.

Installed on the motherboard, IPMI operates independently from the operating system and allows users to access, monitor, diagnose, and manage the server through console redirection.

These are some of the main elements that IPMI manages:

● Baseboard management controller (BMC) built-in IPMI firmware

● Remote server power control

● Remote serial-over-LAN (SOL) technology (text console)

● BIOS and firmware upgrade processes

● Hardware monitoring

● Event log support
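Several of the elements listed above can also be reached from a command line. The following is a minimal sketch, assuming the open-source `ipmitool` utility is installed on an administration host and IPMI over LAN is enabled on the BMC. The `bmc` helper and the `BMC_HOST`, `BMC_USER`, `BMC_PASSWORD`, and `DRY_RUN` variables are hypothetical names introduced for illustration and are not part of the Cisco tooling.

```shell
# Hypothetical wrapper around ipmitool for IPMI-over-LAN access to the BMC.
# BMC_HOST, BMC_USER, and BMC_PASSWORD are assumed environment variables.
# Set DRY_RUN=1 to print the command line instead of contacting the BMC.
bmc() {
    if [ -n "${DRY_RUN:-}" ]; then
        # The password is never printed; -E makes ipmitool read IPMI_PASSWORD.
        echo "ipmitool -I lanplus -H ${BMC_HOST} -U ${BMC_USER} -E $*"
    else
        IPMI_PASSWORD="${BMC_PASSWORD}" \
            ipmitool -I lanplus -H "${BMC_HOST}" -U "${BMC_USER}" -E "$@"
    fi
}

# Example calls (not executed here):
#   bmc chassis power status   # remote server power control
#   bmc sol activate           # serial-over-LAN text console
#   bmc sdr list               # hardware monitoring (sensor readings)
#   bmc sel list               # system event log
```

Passing the password through the `IPMI_PASSWORD` environment variable keeps it out of the process list; whether this fits a given security policy is a site-specific decision.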

Cisco Intersight platform

The Cisco Intersight solution, Cisco’s new systems management platform, delivers intuitive computing through cloud-powered intelligence. This modular platform offers a more intelligent level of management and enables IT organizations to analyze, simplify, and automate their IT environments in ways that were not possible with prior generations of tools. This capability empowers organizations to achieve significant savings in total cost of ownership (TCO) and to deliver applications faster to support new business initiatives.

The Cisco Intersight platform uses a unified open API design that natively integrates with third-party platforms and tools.

The Cisco Intersight platform delivers unique capabilities such as the following:

● Integration with the Cisco Technical Assistance Center (TAC) for support and case management

● Proactive, actionable intelligence for issues and support based on telemetry data

● Compliance check through integration with the Cisco Hardware Compatibility List (HCL)

Cisco Intersight features for the Cisco UCS C890 M5 server will become available through software updates in the future and will not require any hardware adjustments to adopt.

For more information about the Cisco Intersight platform, see the Cisco Intersight Services SaaS systems management platform webpage.

Cisco Nexus 9000 Series

The Cisco Nexus 9000 Series Switches offer both modular and fixed 1, 10, 25, 40, and 100 Gigabit Ethernet switch configurations with scalability up to 60 Tbps of nonblocking performance with less than 5 microseconds of latency and wire-speed VXLAN gateway, bridging, and routing support.

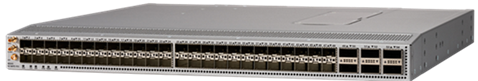

Figure 3. Cisco Nexus 93180YC-FX3 Switch

The Cisco Nexus 9000 Series Switch used in this design is the Cisco Nexus 93180YC-FX3 Switch (Figure 3) configured in Cisco NX-OS standalone mode. Cisco NX-OS is a data center operating system designed for performance, resiliency, scalability, manageability, and programmability at its foundation. It provides a robust and comprehensive feature set that meets the demanding requirements of virtualization and automation in present and future data centers.

The 1RU Cisco Nexus 93180YC-FX3 supports 3.6 Tbps of bandwidth and 1.2 billion packets per second. The 48 downlink ports on the 93180YC-FX3 can support 1, 10, and 25 Gigabit Ethernet, offering deployment flexibility and investment protection. You can configure the six uplink ports as 40 or 100 Gigabit Ethernet, offering flexible migration options.

For more information, visit the Cisco Nexus 9300-FX3 Series data sheet.

Cisco MDS 9100 Series

The Cisco MDS 9148T 32-Gbps 48-Port Fibre Channel Switch is the next generation of the highly reliable, flexible, and low-cost Cisco MDS 9100 Series Multilayer Fabric Switches (Figure 4). It combines high-speed Fibre Channel connectivity for all-flash arrays with exceptional flexibility and cost effectiveness. This powerful, compact 1RU switch scales from 24 to 48 line-rate 32-Gbps Fibre Channel ports.

Figure 4. Cisco MDS 9148T 32-Gbps 48-Port Fibre Channel Switch

The Cisco MDS 9148T delivers advanced storage networking features and functions with ease of management and compatibility with the entire Cisco MDS 9000 Family portfolio for reliable end-to-end connectivity. This switch also offers state-of-the-art SAN analytics and telemetry capabilities that are built in to this next-generation hardware platform. This new state-of-the-art technology couples the next-generation port ASIC with a fully dedicated network processing unit designed to complete analytics calculations in real time.

For more information, visit the Cisco MDS 9148T 32-Gbps 48-Port Fibre Channel Switch data sheet.

Cisco Data Center Network Manager SAN

Use the Cisco Data Center Network Manager (DCNM) SAN to monitor, configure, and analyze Cisco 32-Gbps Fibre Channel fabrics and show information about the Cisco Nexus switching fabric. Cisco DCNM-SAN is deployed as a virtual appliance from an Open Virtualization Appliance (OVA) file and is managed through a web browser. When you add the Cisco MDS and Cisco Nexus switches with the appropriate credentials and licensing, you can begin monitoring the SAN and Ethernet fabrics. Additionally, you can add, modify, and delete virtual SANs (VSANs), device aliases, zones, and zone sets using the DCNM point-and-click interface. Configure the Cisco MDS switches from within Cisco Data Center Device Manager. Add SAN analytics to Cisco MDS switches to gain insights into the fabric monitoring data, perform analysis, and identify and troubleshoot performance problems.

Cisco DCNM integration with Cisco Intersight platform

The Cisco Network Insights Base (Cisco NI Base) application provides useful Cisco TAC Assist functions. It provides a way for Cisco customers to collect technical support across multiple devices and upload the technical support data to the Cisco cloud. The Cisco NI Base application collects CPU, device name, device product ID, serial number, version, memory, device type, and disk-use information for the nodes in the fabric. The Cisco NI Base application is connected to the Cisco Intersight cloud portal through a device connector that is embedded in the management controller of the Cisco DCNM platform. The device connector provides a secure way for connected Cisco DCNM to send and receive information from the Cisco Intersight portal by using a secure Internet connection.

NetApp AFF A400

The NetApp AFF A400 (Figure 5) offers full end-to-end NVMe support. The front-end NVMe and Fibre Channel connectivity enables you to achieve optimal performance from an all-flash array for workloads that include artificial intelligence, machine learning, and real-time analytics as well as business-critical databases. On the back end, the A400 supports both serial-attached SCSI (SAS) and NVMe-attached solid-state disks (SSDs), offering versatility to allow current customers to move up from their existing A-Series systems and addressing customers’ increasing interest in NVMe-based storage. Furthermore, this system was built to provide expandability options, so you won’t have to make a costly leap from a midrange to a high-end system to increase scalability. This solution thus helps protect your NetApp investment now and into the future.

The NetApp AFF A400 offers greater port availability, network connectivity, and expandability. The NetApp AFF A400 has 10 PCIe Gen 3 slots for each high-availability pair. The NetApp AFF A400 offers 25 and 100 Gigabit Ethernet as well as 32-Gbps Fibre Channel and NVMe Fibre Channel network connectivity. This model was created to keep up with changing business needs and performance and workload requirements by merging the latest technology for data acceleration and ultra-low latency in an end-to-end NVMe storage system.

Figure 5. NetApp AFF A400

The NetApp AFF A400 has a 4RU enclosure with two possible onboard connectivity configurations (25 Gigabit Ethernet and 32-Gbps Fibre Channel). In addition, the A400 is the only A-Series system that has the new smart I/O card with an offload engine. The offload engine is computational and independent of the CPU, which allows better allocation of processing power. This system also offers improved serviceability compared to the previous NetApp 4RU chassis: the fan cooling modules have been moved from inside the controller to the front of the chassis, so cabling does not have to be disconnected and reconnected when replacing an internal fan.

The NetApp AFF A400 is well suited for enterprise applications such as SAP that require the best balance of performance and cost, as well as for very demanding workloads that require ultra-low latency such as SAP HANA. The smart I/O card serves as the default cluster interconnect, making the system an excellent solution for highly compressible workloads.

This FlexPod design deploys a high-availability pair of A400 controllers running NetApp ONTAP 9.7. For more information, see the NetApp AFF A400 data sheet.

SAP HANA Tailored Data Center Integration

The SAP HANA in-memory database combines transactional and analytical SAP workloads and thereby takes advantage of the low-cost main memory, data-processing capabilities of multicore processors, and faster data access.

SAP provides an overview of the six phases of SAP HANA TDI, detailing the hardware and software requirements for the entire stack. For more information, see SAP HANA Tailored Data Center Integration–Overview. The enhanced sizing approach allows consolidation of the storage requirements of the whole SAP landscape, including SAP application servers, into a single, central, high-performance enterprise storage array.

The Cisco UCS C890 M5 Rack Server is well suited for any SAP workload, bare-metal or virtualized, and manages SAP HANA databases up to their limit of 24 TB of addressable main memory. In addition to the default reliability, availability, and serviceability (RAS) features, the configuration design of the server includes redundant boot storage and network connectivity. Hardware and software requirements to run SAP HANA systems are defined by SAP. FlexPod uses guidelines provided by SAP, Cisco, and NetApp.

Solution requirements

Several hardware and software requirements defined by SAP must be fulfilled before you set up and install any SAP HANA server. This section describes the solution requirements and design details.

CPU architecture

The eight-socket Cisco UCS C890 M5 Rack Server is available with 2nd Gen Intel Xeon Scalable Platinum 8276 or 8280L processors with 28 cores per CPU socket, or 224 cores total.

The smaller Platinum 8276 processors address a maximum of 6 TB of main memory, and the recommended Platinum 8280L processor addresses all memory configurations listed in Table 1.

Table 1. Cisco UCS C890 M5 Rack Server memory configuration

| | | Quantity | DDR4 capacity | PMem capacity | Usable capacity |
| --- | --- | --- | --- | --- | --- |
| CPU specifications | Intel Xeon Platinum 8280L processor | 8 | | | |
| Memory configurations | 64 GB of DDR4 | 96 | 6 TB | – | 6 TB |
| | 128 GB of DDR4 | 96 | 12 TB | – | 12 TB |
| | 256 GB of DDR4 | 96 | 24 TB | – | 24 TB* |
| | 64 GB of DDR4 and 128 GB of PMem | 48 and 48 | 3 TB | 6 TB | 9 TB |
| | 128 GB of DDR4 and 128 GB of PMem | 48 and 48 | 6 TB | 6 TB | 12 TB |
| | 64 GB of DDR4 and 256 GB of PMem | 48 and 48 | 3 TB | 12 TB | 15 TB* |
| | 128 GB of DDR4 and 256 GB of PMem | 48 and 48 | 6 TB | 12 TB | 18 TB* |
| | 256 GB of DDR4 and 256 GB of PMem | 48 and 48 | 12 TB | 12 TB | 24 TB* |
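The usable capacities in Table 1 follow from the slot counts: 96 modules in a DRAM-only configuration, or 48 DDR4 modules plus 48 PMem modules in a mixed configuration. The sketch below reproduces that arithmetic; the function name `total_memory_tb` is hypothetical and chosen only for illustration.

```shell
# total_memory_tb DDR4_GB [PMEM_GB]
# Prints the total memory in TB for one Cisco UCS C890 M5 server.
#   DRAM only: all 96 DIMM slots hold DDR4 modules of DDR4_GB each.
#   Mixed:     48 slots hold DDR4 modules, 48 slots hold PMem modules.
total_memory_tb() {
    ddr4_gb=$1
    pmem_gb=${2:-0}
    if [ "$pmem_gb" -eq 0 ]; then
        total_gb=$((96 * ddr4_gb))
    else
        total_gb=$((48 * ddr4_gb + 48 * pmem_gb))
    fi
    # 1 TB = 1024 GB; every Table 1 configuration divides evenly.
    echo $((total_gb / 1024))
}

# Example calls:
#   total_memory_tb 128       # DRAM only, 128 GB modules
#   total_memory_tb 64 256    # 64 GB DDR4 with 256 GB PMem modules
```

For instance, 48 x 64 GB of DDR4 plus 48 x 256 GB of PMem gives 3 TB + 12 TB = 15 TB, which matches the corresponding row of the table.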

Memory platform support

Because of the SAP-defined memory-per-socket ratio, a Cisco UCS C890 M5 Rack Server fully populated with DDR4 DRAM is certified for SAP HANA 2.0 with up to:

● 6 TB for SAP NetWeaver Business Warehouse (BW) and DataMart

● 12 TB for SAP Business Suite on SAP HANA (SoH/S4H)

Intel Optane PMem modules are supported with recent Linux distributions and SAP HANA 2.0. In App Direct mode, the persistent memory modules appear as byte-addressable memory resources that are controlled by SAP HANA.

The use of Intel Optane PMem requires a homogeneous, symmetrical assembly of DRAM and PMem modules with maximum utilization of all memory channels per processor. Different-sized Intel Optane PMem and DDR4 DIMMs can be used together as long as supported ratios are maintained.

Various capacity ratios between PMem and DRAM modules are supported, depending heavily on the data model and data distribution. A correct SAP sizing exercise is strongly recommended before considering Intel Optane PMem for SAP HANA.

FAQ: SAP HANA Persistent Memory provides more information if you are interested in using persistent memory in SAP HANA environments.

Network requirements

An SAP HANA data center deployment can range from a database running on a single host to a complex distributed system. Distributed systems can be complex, with multiple hosts located at a primary site having one or more secondary sites and supporting a distributed multiterabyte database with full fault and disaster recovery.

SAP recommends separating the network into different zones for client, storage, and host-to-host communication. Refer to the document SAP HANA Network Requirements for more information and sizing recommendations.

The network interfaces of the Cisco UCS C890 M5 server provide 25-Gbps network connectivity and are sufficient to fulfill the SAP HANA network requirements.

The NetApp AFF A-Series array connects to the pair of Cisco MDS 9148T Fibre Channel switches and to the Cisco UCS C890 M5 server HBAs with either 16- or 32-Gbps port speed, which fulfills the minimum requirement of 8-Gbps port speed.

Operating system

This Cisco UCS C890 M5 Rack Server is certified for Red Hat Enterprise Linux 8 (RHEL) for SAP Solutions and SUSE Linux Enterprise Server 15 (SLES) for SAP Applications.

To evaluate compatibility between the Linux operating system release and SAP HANA platform release, refer to SAP Note 2235581: SAP HANA: Supported Operating Systems.

SAP HANA single-node system (scale up)

The SAP HANA scale-up solution is the simplest installation type. In general, this solution provides the best SAP HANA performance. All data and processes are located on the same server, so no additional network considerations, such as those for internode communication, are required. This solution requires one server with dedicated volumes for Linux and SAP HANA in the NetApp storage.

FlexPod scales with the size and number of available ports of the Cisco MDS 9000 Family multilayer SAN switches and the Cisco Nexus network switches, and in addition with the capacity and storage controller capabilities of the NetApp AFF A-Series arrays. The certification scenarios and their limitations are documented in the SAP Certified and Supported SAP HANA Hardware directory.

SAP HANA multiple-node system (scale out)

An SAP HANA scale-up installation with a high-performance Cisco UCS C890 M5 server is the preferred deployment for large SAP HANA databases. Nevertheless, you will need to distribute the SAP HANA database to multiple nodes if the amount of main memory of a single node is not sufficient to keep the SAP HANA database in memory. Multiple independent servers are combined to form one SAP HANA system, distributing the load among multiple servers.

In a distributed system, typically each index server is assigned to its own host to achieve the best performance. You can assign different tables to different hosts (database partitioning), or you can split a single table across hosts (table partitioning). SAP HANA comes with an integrated high-availability option, configuring one single server as a standby host.

To access the shared SAP HANA binaries, Network File System (NFS) storage is required independent from the storage connectivity of the SAP HANA log and data volumes. In the event of a failover to the standby host, the SAP HANA Storage Connector API manages the remapping of the logical volumes. Redundant connectivity to the corporate network over LAN (Ethernet) and to the storage over SAN (Fibre Channel) always must be configured.

The Cisco UCS C890 M5 server is certified for up to four active SAP HANA worker nodes, whether for SAP Suite for HANA or SAP Business Warehouse for HANA. Additional nodes usually are supported by SAP after an additional validation.

If no application-specific sizing program data is available, the recommended size for the SAP HANA volumes is directly related to the total memory required for the system. Additional information is available from the SAP HANA Storage Requirements white paper.

Each SAP HANA TDI worker node has the following recommended volume sizes:

● /usr/sap ≥ 52 GB

● /hana/data = 1.2 x memory

● /hana/log = 512 GB

For an SAP HANA scale-up system, the disk space recommendation for the SAP HANA shared volume is:

● /hana/shared = 1024 GB

For up to four worker nodes in an SAP HANA scale-out system, the disk space recommendation for the NFS-based SAP HANA shared volume is:

● /hana/shared = 1 x memory
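The sizing rules above can be sketched as a small calculation. This is an illustration only, not a replacement for an SAP sizing exercise. The function name `hana_volume_sizes` is hypothetical, the data volume is rounded up to the next full GB, and "memory" in the scale-out rule is assumed to mean the main memory of one worker node.

```shell
# hana_volume_sizes MEMORY_GB LAYOUT
# MEMORY_GB: main memory of one worker node in GB (assumption: per node).
# LAYOUT:    scale-up or scale-out (up to four worker nodes).
hana_volume_sizes() {
    memory_gb=$1
    layout=$2
    # /hana/data = 1.2 x memory, rounded up using integer arithmetic.
    data_gb=$(( (memory_gb * 12 + 9) / 10 ))
    case "$layout" in
        scale-up)  shared_gb=1024 ;;
        scale-out) shared_gb=$memory_gb ;;
        *) echo "unknown layout: $layout" >&2; return 1 ;;
    esac
    echo "/usr/sap >= 52 GB"
    echo "/hana/data = ${data_gb} GB"
    echo "/hana/log = 512 GB"
    echo "/hana/shared = ${shared_gb} GB"
}

# Example calls:
#   hana_volume_sizes 6144 scale-up    # single node with 6 TB of memory
#   hana_volume_sizes 3072 scale-out   # worker nodes with 3 TB each
```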

Virtualized SAP HANA

SAP HANA database bare-metal installations can run along with virtualized SAP application workloads, which are common in the data center. Additional rules and limitations apply when the SAP HANA database is virtualized, such as the number of virtual CPUs (vCPUs) and memory limits. SAP Note 2652670 provides links and information you can refer to when installing the SAP HANA platform in a virtual environment using VMware vSphere virtual machines.

High availability

Multiple approaches are available to increase the availability and resiliency of SAP HANA running on the Cisco UCS C890 M5 server within FlexPod, protecting against interruptions and preventing outages from occurring. Here are just a few hardware-based configuration recommendations to eliminate any single point of failure:

● Dual network cards with active-passive or Link Aggregation Control Protocol (LACP) network bond configuration

● Redundant multiple paths to boot and SAP HANA persistence logical unit numbers (LUNs)

● Redundant data paths and dual controllers for NetApp AFF arrays
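As an illustration of the first recommendation, the sketch below prints (but does not run) NetworkManager `nmcli` commands for a two-port bond. It assumes a Red Hat Enterprise Linux 8 host managed by NetworkManager; SUSE Linux Enterprise Server typically uses different tooling. The function name `bond_commands`, the bond name `bond0`, the `miimon=100` setting, and the interface names in the example are hypothetical. To avoid a single point of failure, the two ports would normally sit on different network cards.

```shell
# bond_commands MODE PORT1 PORT2
# MODE: 802.3ad (LACP) or active-backup (active-passive).
# Prints the nmcli commands so they can be reviewed before being run.
bond_commands() {
    mode=$1
    port1=$2
    port2=$3
    echo "nmcli connection add type bond con-name bond0 ifname bond0 bond.options \"mode=${mode},miimon=100\""
    for port in "$port1" "$port2"; do
        echo "nmcli connection add type ethernet slave-type bond con-name bond0-${port} ifname ${port} master bond0"
    done
}

# Example call (interface names are placeholders):
#   bond_commands 802.3ad ens1f0 ens2f0
```

An LACP bond also requires a matching port-channel configuration on the Cisco Nexus switches, which is outside the scope of this sketch.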

Software-based recommendations include SAP HANA embedded functions such as the use of an SAP HANA standby host in a scale-out scenario or SAP HANA system replication to another host, ideally part of a Linux cluster setup, which can reduce downtime to minutes. Designs that include redundancy entail trade-offs between risk and cost, so choose the right balance for your data center operations requirements.

Most IT emergencies are caused by hardware failures and human errors, and a working disaster-recovery strategy is recommended as well. NetApp SnapCenter integrates seamlessly with native SAP HANA Backint backups and provides disaster-recovery support.

This section describes the configuration steps for installing and integrating the Cisco UCS C890 M5 Rack Server into an existing FlexPod for SAP HANA TDI environment. For more information, see the FlexPod for SAP HANA TDI deployment guide.

Naming conventions

This guide uses the following conventions for commands that you enter at the command-line interface (CLI).

Commands that you enter at a CLI prompt appear like this:

hostname# hostname

Angle brackets (<>) indicate a mandatory character string that you need to enter, such as a variable pertinent to the customer environment or a password:

hostname# ip addr add <ip_address/mask> dev <interface>

For example, to assign 192.168.1.200/255.255.255.0 to network interface eth0, replace the information in the angle brackets like this:

hostname# ip addr add 192.168.1.200/255.255.255.0 dev eth0
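The example above writes the mask in dotted-decimal form. Many tools display or expect the equivalent CIDR prefix length instead, so 255.255.255.0 is usually shown as /24. The following sketch converts one form to the other; the function name `mask_to_prefix` is hypothetical, and the function does not check that the mask bits are contiguous.

```shell
# mask_to_prefix NETMASK
# Prints the CIDR prefix length for a dotted-decimal IPv4 netmask.
mask_to_prefix() {
    prefix=0
    old_ifs=$IFS
    IFS=.
    # Split the netmask into its four octets.
    set -- $1
    IFS=$old_ifs
    for octet in "$@"; do
        case "$octet" in
            255) prefix=$((prefix + 8)) ;;
            254) prefix=$((prefix + 7)) ;;
            252) prefix=$((prefix + 6)) ;;
            248) prefix=$((prefix + 5)) ;;
            240) prefix=$((prefix + 4)) ;;
            224) prefix=$((prefix + 3)) ;;
            192) prefix=$((prefix + 2)) ;;
            128) prefix=$((prefix + 1)) ;;
            0) ;;
            *) echo "invalid netmask octet: $octet" >&2; return 1 ;;
        esac
    done
    echo "$prefix"
}

# Example call:
#   mask_to_prefix 255.255.255.0
```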

Configure the Cisco UCS C890 M5 Rack Server

This section presents the important host setup configuration tasks.

Configure the BIOS

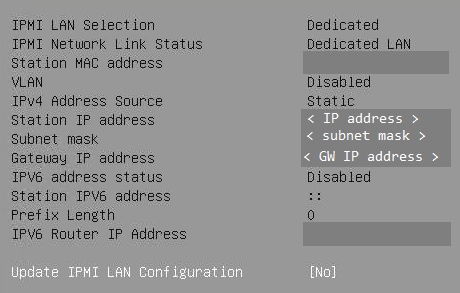

Set up the IPMI LAN configuration first to be able to continue the configuration from the remote console.

Connect to the keyboard, video, and mouse console

First connect to the keyboard, video, and mouse (KVM) console.

1. Connect the KVM cable from the accessory box to a laptop computer for the initial BIOS and IPMI configuration.

2. Press the power button located in the top-right corner on the front of the server.

3. When the Cisco logo appears, press Delete to enter the AMI BIOS setup utility.

Main menu: Configure system date and time

Set the system date and system time on the main screen of the BIOS.

IPMI menu: Configure BMC network

Configure the BMC network.

1. Change to the IPMI top menu and use the arrow keys to select the BMC Network Configuration menu.

2. Update the IPMI LAN configuration and provide an IP address, subnet mask, and gateway IP address.

3. Move to the Save & Exit top menu and save the changes and reset the server.

4. When the Cisco logo appears, press Delete to enter the AMI BIOS setup utility.
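For later changes made from an installed Linux system, the same BMC LAN settings can usually be applied with the open-source ipmitool utility. The following is a minimal sketch, not a procedure validated in this guide: it assumes that the BMC accepts standard IPMI LAN commands, that the LAN channel is 1, and that the addresses shown are placeholders. The commands are only printed unless APPLY=1 is set.

```shell
#!/usr/bin/env bash
# Sketch: set a static BMC LAN configuration with ipmitool.
# Assumptions (not from this guide): LAN channel 1 and the example addresses.

# Print each command; execute it only when APPLY=1 is set.
run() {
  echo "+ $*"
  if [ "${APPLY:-0}" = "1" ]; then "$@"; fi
}

configure_bmc_lan() {
  local channel="$1" ip="$2" mask="$3" gateway="$4"
  run ipmitool lan set "$channel" ipsrc static
  run ipmitool lan set "$channel" ipaddr "$ip"
  run ipmitool lan set "$channel" netmask "$mask"
  run ipmitool lan set "$channel" defgw ipaddr "$gateway"
  run ipmitool lan print "$channel"
}

configure_bmc_lan 1 192.168.1.10 255.255.255.0 192.168.1.1
```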

Switch to IPMI console management

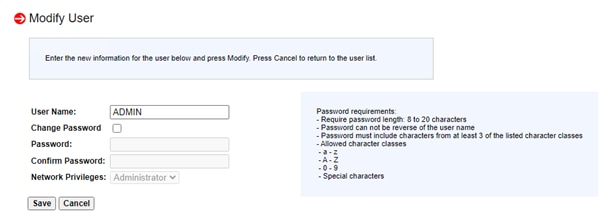

Disconnect the KVM cable and connect to the IPMI LAN IP address with your preferred browser. Log in as the user ADMIN with the unique password that comes with the server.

1. To modify the initial password, choose Configuration > Users.

2. Select the row with the ADMIN user and click the Modify User button to change the initial password.

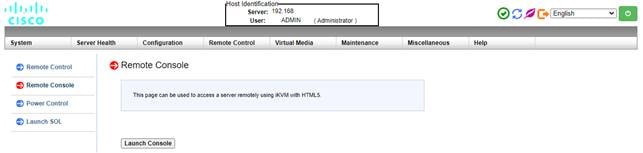

3. Choose Remote Control > Remote Console.

4. Press the Launch Console button to access the remote console.

5. The remote console opens in a new window displaying the AMI BIOS setup utility.

Advanced menu: Configure CPU

Use the Advanced menu to configure the CPU.

1. Use the arrow keys to select the CPU Configuration menu.

2. For a bare-metal installation, disable Intel Virtualization Technology.

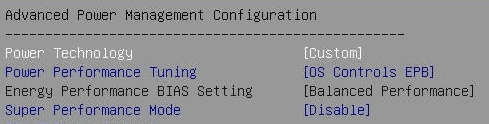

3. Select Advanced Power Management Configuration at the bottom of the processor configuration page.

4. Change Power Technology to Custom.

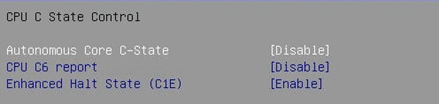

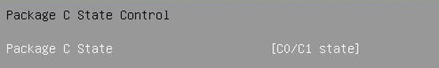

5. Select CPU C State Control and disable Autonomous Core C-State and CPU C6 report.

6. Select Package C State Control and change the Package C State value to C0/C1 state.
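After an operating system is installed, the idle states that the kernel actually exposes can be cross-checked from sysfs. This is a minimal sketch that reads the standard Linux cpuidle directory for one CPU; which states appear depends on the idle driver in use, so treat the output as a sanity check rather than proof of the BIOS settings.

```shell
#!/usr/bin/env bash
# Sketch: list the CPU idle states that the Linux kernel exposes for one CPU.
# The default location is the standard cpuidle sysfs path; an optional
# argument points the function at another directory.

list_idle_states() {
  local dir="${1:-/sys/devices/system/cpu/cpu0/cpuidle}"
  local state
  for state in "$dir"/state*; do
    [ -r "$state/name" ] || continue
    printf '%s: %s (disabled=%s)\n' "${state##*/}" "$(cat "$state/name")" \
      "$(cat "$state/disable" 2>/dev/null || echo unknown)"
  done
}

list_idle_states
```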

Advanced menu: Configure memory RAS

Modern servers, including Cisco UCS M5 servers, provide increased memory capacities that run at higher bandwidths and lower voltages. These trends, along with higher application memory demands and advanced multicore processors, contribute to an increased probability of memory errors.

Fundamental error-correcting code (ECC) capabilities and scrub protocols were historically successful at handling and mitigating memory errors. As memory and processor technologies advance, RAS features must evolve to address new challenges. Improve the server resilience with the Adaptive Double Device Data Correction (ADDDC Sparing) feature, which mitigates correctable memory errors.

1. Use the arrow keys to select the Chipset Configuration menu.

2. Continue with North Bridge and Memory Configuration.

3. Keep all values and select Memory RAS Configuration at the bottom of the screen.

4. Enable ADDDC Sparing.

Advanced menu: Configure PCIe, PCI, and PnP

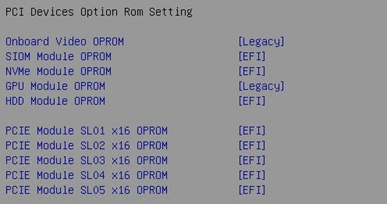

In the default configuration, the option ROM settings are set to Legacy. Configuration utilities of the add-on cards are available when you press a key combination such as Ctrl+R for the MegaRAID cards during initialization of the card itself. Changing the BIOS settings to extensible firmware interface (EFI) will enable access to the configuration utilities directly from the BIOS after the next reboot.

1. Use the arrow keys to select the PCIe/PCI/PnP Configuration menu.

2. Change the PCI Devices Option Rom Setting values from Legacy to EFI.

3. Optionally, select the Network Stack configuration to disable PXE and HTTP boot support if this is not required.

Boot menu: Confirm the boot mode

Switch to the Boot screen in the top menu. Confirm that the entry for Boot mode select is UEFI.
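Once Linux is installed, the boot mode can also be confirmed from the running system. The sketch below relies on the fact that the kernel creates the /sys/firmware/efi directory only when the system was booted through UEFI; the function name is illustrative.

```shell
#!/usr/bin/env bash
# Sketch: report whether the running Linux system was booted through UEFI.
# The kernel creates /sys/firmware/efi only on UEFI boots.

boot_mode() {
  local efi_dir="${1:-/sys/firmware/efi}"
  if [ -d "$efi_dir" ]; then echo "UEFI"; else echo "Legacy BIOS"; fi
}

boot_mode
```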

Save & Exit menu: Save changes

Save all changes and reset the server to activate the changes.

After the system initialization, the Cisco logo appears. Press Delete to enter the AMI BIOS setup utility again.

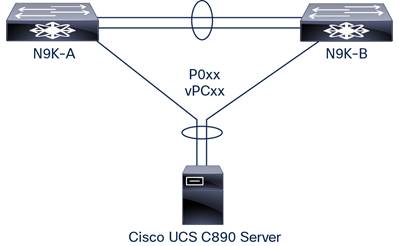

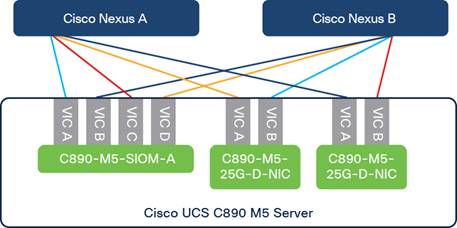

Configure the Cisco Nexus switch

Ports on the Cisco Nexus switch pair connect directly to the host Ethernet interface ports and are configured as a port channel, which becomes part of a virtual port channel (vPC). Figure 6 shows the connectivity topology with a pair of interfaces as an example.

Simplified topology of Cisco UCS C890 connectivity to Cisco Nexus 9000 Series Switches of FlexPod Datacenter

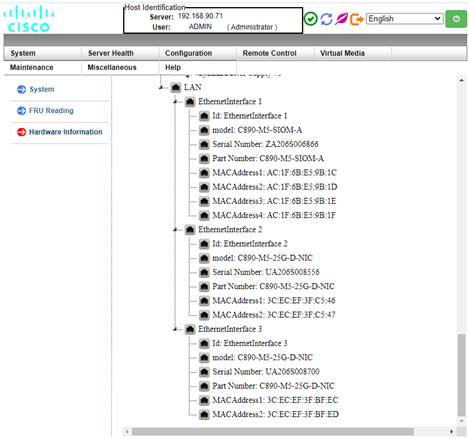

The Cisco UCS C890 server network cards support LACP for Ethernet, which controls the way that the physical ports are bundled together to form one logical channel. One link from the four-port SIOM card and one link from one of the two dual-port Ethernet cards are combined into a port channel for higher availability. Figure 7 shows the LAN interface card information on the C890 host.

LAN information from Cisco UCS C890 IPMI console

At the host OS level, an LACP (IEEE 802.3ad) bond interface is configured; the exact procedure depends on the Linux distribution. With eight ports available from three interface cards on the host, four bonding interfaces, each combining a pair of 25 Gigabit Ethernet interfaces from different interface cards for redundancy, can be created to serve the different SAP HANA networks, depending on the implementation scenario. Figure 8 shows one such example.

Bonding interface slave selection example.

Note: Identically colored 25 Gigabit Ethernet links between the host and the Cisco Nexus switch pair depict possible bonding interface constituents.

Network bond interfaces can be configured with commands or with administration tools such as yast in SLES and nmtui in RHEL.
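As a command-based illustration, the following sketch creates one LACP bond from two ports with nmcli on a NetworkManager-based system such as RHEL 8. The bond name, interface names, bond options, and IP address are hypothetical examples and not values from this guide. The commands are only printed unless APPLY=1 is set.

```shell
#!/usr/bin/env bash
# Sketch: create an LACP (IEEE 802.3ad) bond from two ports with nmcli.
# Assumptions (not from this guide): bond name, interface names, bond options,
# and the IP address are hypothetical examples.

# Print each command; execute it only when APPLY=1 is set.
run() {
  echo "+ $*"
  if [ "${APPLY:-0}" = "1" ]; then "$@"; fi
}

create_lacp_bond() {
  local bond="$1" address="$2"; shift 2
  run nmcli connection add type bond con-name "$bond" ifname "$bond" \
    bond.options "mode=802.3ad,miimon=100,lacp_rate=fast"
  local port
  for port in "$@"; do
    # One port profile per physical interface, attached to the bond.
    run nmcli connection add type ethernet slave-type bond \
      con-name "$bond-$port" ifname "$port" master "$bond"
  done
  run nmcli connection modify "$bond" ipv4.addresses "$address" ipv4.method manual
  run nmcli connection up "$bond"
}

create_lacp_bond bond0 192.168.76.11/24 ens1f0 ens4f0
```

After activation, /proc/net/bonding/bond0 reports the bonding mode and the state of each member link, which helps confirm that LACP negotiated with the switch pair.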

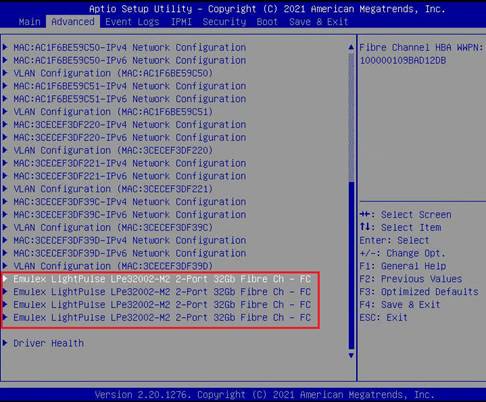

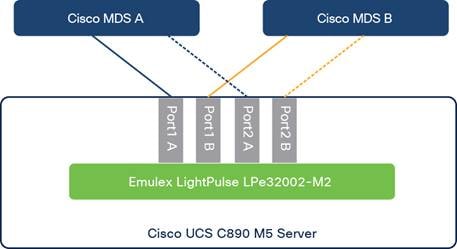

Configure the Cisco MDS switch

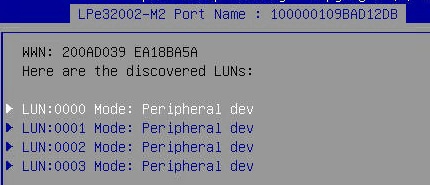

Four 16- and 32-Gbps Fibre Channel HBA ports on the Cisco UCS C890 server connect to a pair of Cisco MDS 9000 Series multilayer switches and to the NetApp AFF A-Series array. Figure 9 shows the available Emulex HBA ports configurable on the setup page.

Emulex LightPulse 32-Gbps Fibre Channel HBA

The Cisco UCS C890 server is directly connected to both SAN fabrics. At least one pair of ports, and optionally both pairs, can be connected to create multiple paths to the LUNs, depending on requirements. For Fibre Channel–based implementations, this approach enables access to the boot LUN as well as to the SAP HANA persistence LUNs configured on the NetApp array.

Simplified topology of Cisco UCS C890 connectivity to Cisco MDS 9000 Family switches of FlexPod Datacenter

These are some of the high-level tasks needed to configure the Cisco MDS 9000 Family switch:

1. Check the fabric logins on both Cisco MDS switches and look for the host HBA World Wide Port Names (WWPNs).

2. Optionally, create device aliases for the logged-in Cisco UCS C890 M5 HBA ports and assign them to the correct VSAN.

3. Create a new zone on each Cisco MDS switch. In an SAP HANA scale-out scenario, add each additional interface of the worker nodes to the same single zone. Verify that the intended target WWPNs from the NetApp array and the host initiator WWPNs are part of the zone.

4. Add the new member zone created to the existing zone set and activate the zone set.
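Once Linux is running on the host, the initiator WWPNs that appear in the fabric logins, zones, and igroups can be cross-checked against what the kernel reports. This minimal sketch reads the standard fc_host sysfs class and prints each WWPN in colon-separated notation; the WWPN in the comment is a made-up example.

```shell
#!/usr/bin/env bash
# Sketch: print the WWPN of every Fibre Channel HBA port known to the kernel,
# in the colon-separated notation used for switch zoning and storage igroups.

list_wwpns() {
  local base="${1:-/sys/class/fc_host}"
  local host raw
  for host in "$base"/host*; do
    [ -r "$host/port_name" ] || continue
    raw="$(cat "$host/port_name")"      # hypothetical value: 0x10000090fa123456
    raw="${raw#0x}"
    printf '%s %s\n' "${host##*/}" "$(echo "$raw" | sed 's/../&:/g; s/:$//')"
  done
}

list_wwpns
```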

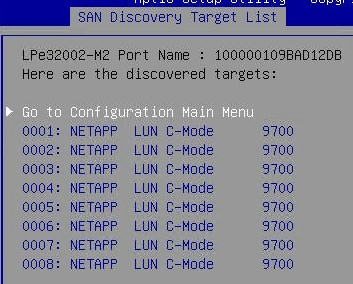

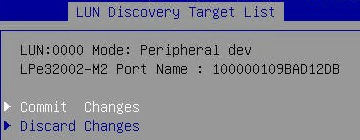

Configure Emulex LightPulse Fibre Channel

Enable SAN boot and configure the four ports of the Emulex LPe32002-M2 HBAs accordingly.

1. Use the arrow keys to select the Advanced menu tab.

2. Use the arrow keys to scroll to the first Emulex LightPulse LPe32002-M2 two-port 32-Gbps Fibre Channel entry and press the Return key.

3. Change the Set Boot from SAN entry to Enable.

4. Scan for Fibre Devices to discover all targets.

5. Press Enter to return to the configuration main menu.

6. Select Add Boot Device.

7. Select the LUN:0000 entry and press Enter.

8. Select the LUN with ID 0000 and press Enter.

9. Commit the change and press Esc to return to the SAN Discovery Target List screen.

10. Repeat steps 6 through 9 for targets 0001, 0002, and 0003 and return to the configuration main menu.

11. Press Esc twice to return to the Advanced menu.

12. Repeat the preceding steps for the next three Emulex LightPulse Fibre Channel ports.

Configure NetApp storage

This section provides an overview of the NetApp storage configuration.

Configure NFS file system access

Cisco UCS C890 nodes access the NFS shared file system and the SAP HANA persistence partitions through the Ethernet interface assigned to the appropriate network. The host should be able to mount the file system using the NFS Version 3 (v3) or v4.1 protocol, as configured on the array.

Configure Fibre Channel LUN access

On both infrastructure and application-specific storage virtual machines (SVMs), create initiator groups (igroups) with the host HBA WWPN information. Carve out the boot LUN in the infrastructure SVM and the SAP HANA persistence LUNs under the application- or tenant-specific SVM and map them to the respective igroups created for the host. Zoning on the Cisco MDS switches and igroups on the storage array help ensure secure booting and authorized access to the SAP HANA persistence LUNs.

This section provides an overview of the Linux operating system installation process. It includes OS customization–related SAP Notes you should apply to meet SAP HANA requirements.

SUSE Linux Enterprise Server for SAP Applications installation

SLES for SAP Applications is the reference platform for SAP software development. It is optimized for SAP applications such as SAP HANA. The SUSE documentation includes an installation quick-start guide that describes the installation workflow procedure. Consult the SLES for SAP Applications 15.x Configuration Guide attached to SAP Note 1944799: SAP HANA Guidelines for SLES Operating System Installation and follow the installation workflow.

The following supplemental SUSE information is available from the SAP Notes system:

● SAP Note 2369910: SAP Software on Linux: General Information

● SAP Note 2578899: SUSE Linux Enterprise Server 15: Installation Note

● SAP Note 2684254: SAP HANA DB: Recommended OS Settings for SLES 15 / SLES for SAP Applications 15

● SAP Note 1275776: Linux: Preparing SLES for SAP Environments

● SAP Note 2382421: Optimizing the Network Configuration on HANA- and OS-Level

Disable OS-based memory error monitoring

Linux supports two features related to error monitoring and logging: error detection and correction (EDAC) and machine-check event log (mcelog). Both are common in most recent Linux distributions. Cisco recommends disabling EDAC-based error collection, to allow all error reporting to be handled in firmware.

Disable EDAC by adding the kernel option edac_report=off. To add the kernel option, use the following command:

# yast bootloader
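After the boot loader change and a reboot, the presence of the option can be verified on the running kernel. The following sketch reads /proc/cmdline; the helper name has_kernel_arg is illustrative.

```shell
#!/usr/bin/env bash
# Sketch: check whether a kernel option is present on the running kernel's
# command line, for example after a reboot.

has_kernel_arg() {
  local arg="$1" cmdline_file="${2:-/proc/cmdline}"
  [ -r "$cmdline_file" ] || return 1
  # Split the command line into one option per line and match exactly.
  tr ' ' '\n' < "$cmdline_file" | grep -qxF -- "$arg"
}

if has_kernel_arg edac_report=off; then
  echo "edac_report=off is active"
else
  echo "edac_report=off is not set"
fi
```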

Mcelog is enabled by default in most recent Linux distributions, such as SLES for SAP Applications 15. For customers who prefer to collect all diagnostic and fault information from OS-resident tools, mcelog is recommended. Firmware logs may be incomplete when OS logging is enabled.

Red Hat Enterprise Linux for SAP Solutions installation

RHEL 8 introduces the concept of Application Streams. Multiple versions of user-space components are now delivered and updated more frequently than the core operating system packages. This change provides greater flexibility for customizing RHEL without affecting the underlying stability of the platform or specific deployments. Consult the RHEL 8 installation and configuration guide for instructions about how to download the appropriate RHEL for SAP Solutions installation image and follow the installation workflow. During the installation process, be sure to apply the best practices listed in SAP Note 2772999: Red Hat Enterprise Linux 8.x: Installation and Configuration.

The following supplemental RHEL information is available from the SAP Notes system:

● SAP Note 2369910: SAP Software on Linux: General Information

● SAP Note 2772999: Red Hat Enterprise Linux 8.x: Installation and Configuration

● SAP Note 2777782: SAP HANA DB: Recommended OS Settings for RHEL 8

● SAP Note 2886607: Linux: Running SAP Applications Compiled with GCC 9.x

● SAP Note 2382421: Optimizing the Network Configuration on HANA- and OS-Level

Disable OS-based memory-error monitoring

Linux supports two features related to error monitoring and logging: EDAC and mcelog. Both are common in most recent Linux distributions. Cisco recommends disabling EDAC-based error collection, to allow all error reporting to be handled in firmware.

EDAC can be disabled by adding the option edac_report=off to the kernel command line. Mcelog is enabled by default in most recent Linux distributions, such as RHEL 8.

For customers who prefer to collect all diagnostic and fault information from OS-resident tools, mcelog is recommended. In this case, Cisco recommends disabling corrected machine-check interrupt (CMCI) to prevent performance degradation. Firmware logs may be incomplete when OS logging is enabled.
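On RHEL 8, one common way to add kernel command-line options is the grubby utility. The sketch below only prints the command unless APPLY=1 is set. The option mce=no_cmci is the kernel parameter generally used to disable CMCI; it is stated here as an assumption, because this guide does not name the parameter.

```shell
#!/usr/bin/env bash
# Sketch: add kernel options to all installed kernels on RHEL 8 with grubby.
# The command is only printed unless APPLY=1 is set; a reboot is needed
# before the options take effect.

run() {
  echo "+ $*"
  if [ "${APPLY:-0}" = "1" ]; then "$@"; fi
}

add_kernel_args() {
  run grubby --update-kernel=ALL --args="$*"
}

# Disable EDAC reporting so that firmware handles error reporting.
add_kernel_args edac_report=off

# Variant when mcelog is used for OS-based logging (assumed parameter name):
# add_kernel_args mce=no_cmci
```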

Fix BIOS boot-order priority after OS installation

Reboot the server from the CLI. Connect to the IPMI address of the Cisco UCS C890 M5 Rack Server with your preferred browser. Use the ADMIN user to log on to IPMI.

1. At the top of the screen, choose Remote Control > Remote Console. The Launch Console button opens a new window with the console redirection.

2. After system initialization, the Cisco logo appears. Press Delete to enter the AMI BIOS setup utility.

3. In the BIOS setup utility, change to the Boot top menu and choose UEFI Hard Disk Drive BBS Priorities.

4. Open Boot Option 1 and choose Red Hat Enterprise Linux/SUSE Linux Enterprise from the drop-down menu.

5. If the boot option UEFI Hard Disk: Red Hat Enterprise Linux/SUSE Linux Enterprise is not listed at the first position of the boot order, move it to the first position.

6. Change to the Save & Exit top menu and quit the setup utility with Save Changes and Reset.

All version-specific SAP installation and administration documentation is available from the SAP HANA Help portal: https://help.sap.com/hana. Refer to the official SAP documentation, which describes the various SAP HANA installation options.

Note: Review all relevant SAP Notes related to the SAP HANA installation for any recent changes.

SAP HANA Platform 2.0 installation

The official SAP documentation describes in detail how to install the HANA software and its required components. All required file systems are already mounted for the installation.

Install an SAP HANA scale-up solution

Download and extract the SAP HANA Platform 2.0 software to an installation subfolder of your choice. Follow the installation workflow of the SAP HANA Database Lifecycle Manager (hdblcm) and provide the user passwords when asked.

1. Change to the folder <installation path>/DATA_UNITS/HDB_LCM_Linux_X86_64.

2. Adapt the following command according to your SAP system ID (SID), SAP system number, host name, and required components:

# ./hdblcm --action install --components=server,client --install_hostagent \
--number <SAP system number> --sapmnt=/hana/shared --sid=<SID> \
--hostname=<hostname> --certificates_hostmap=<hostname>=<map name>

3. Switch the user to <sid>adm to verify that SAP HANA is up and running:

# sapcontrol -nr <SAP system number> -function GetProcessList
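The check can be scripted by evaluating the status column of the process list. The following is a minimal sketch that assumes the comma-separated table layout that sapcontrol typically prints (a header line starting with "name," followed by one row per process, with the display status in the third column); the instance number 00 is a placeholder.

```shell
#!/usr/bin/env bash
# Sketch: succeed only if every process row in GetProcessList output is GREEN.
# Assumption: comma-separated rows with the display status in column 3.

all_processes_green() {
  awk -F', ' '
    /^name, / { in_table = 1; next }          # header row starts the table
    in_table && NF >= 3 { total++; if ($3 != "GREEN") bad++ }
    END { exit (total > 0 && bad == 0) ? 0 : 1 }
  '
}

# Usage as <sid>adm; 00 is a placeholder instance number.
if command -v sapcontrol >/dev/null 2>&1; then
  if sapcontrol -nr 00 -function GetProcessList | all_processes_green; then
    echo "All SAP HANA processes are running"
  else
    echo "At least one SAP HANA process is not GREEN"
  fi
fi
```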

Install an SAP HANA scale-out solution

SAP HANA includes a ready-to-use storage connector client to manage Fibre Channel–attached devices with native multipathing. This feature enables automatic host failover at the block-storage level, which is required for successful failover to a standby host.

The fcClient/fcClientLVM implementation uses standard Linux commands, such as multipath and sg_persist. It is responsible for mounting the SAP HANA data and log volumes and implements the fencing mechanism during host failover by means of Small Computer System Interface (SCSI) 3 persistent reservations.

For a scale-out installation based on SAP HANA persistence presented as Fibre Channel LUNs, the SAP HANA data and log volumes are not mounted upfront, and the SAP HANA shared volume is NFS mounted on all hosts.

Prepare an installation configuration file for the SAP Storage Connector API to use during the installation process. The file itself is not required after the installation process is complete.

# vi /tmp/cisco/global.ini

[communication]

listeninterface = .global

[persistence]

basepath_datavolumes = /hana/data/ANA

basepath_logvolumes = /hana/log/ANA

[storage]

ha_provider = hdb_ha.fcClient

partition_*_*__prtype = 5

partition_*_data__mountoptions = -o relatime,inode64

partition_*_log__mountoptions = -o relatime,inode64

partition_1_data__wwid = 3600a0980383143752d5d506d48386b6b

partition_1_log__wwid = 3600a098038314375463f506c79694179

.

.

.

[trace]

ha_fcclient = info
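For larger scale-out systems, the per-partition WWID lines of the [storage] section can be generated rather than typed. This minimal sketch prints the lines from an ordered list of WWIDs, which can be read on the host with multipath -ll; the function name is illustrative, and the two WWIDs are the example values from the file above.

```shell
#!/usr/bin/env bash
# Sketch: print partition_<n>_<type>__wwid lines for the [storage] section of
# global.ini from an ordered list of multipath WWIDs.

partition_lines() {
  local type="$1"; shift        # "data" or "log"
  local n=1 wwid
  for wwid in "$@"; do
    printf 'partition_%d_%s__wwid = %s\n' "$n" "$type" "$wwid"
    n=$((n + 1))
  done
}

partition_lines data 3600a0980383143752d5d506d48386b6b
partition_lines log  3600a098038314375463f506c79694179
```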

However, with NFS-based SAP HANA persistence, the SAP HANA data and log file systems are mounted on all hosts along with the SAP HANA shared file system.

# vi /etc/fstab

UUID=e63b7954-1ca2-45b6-8900-3416b59dc613 / xfs defaults 0 0

UUID=ca908dd6-0810-4f30-afb5-ee5cf1c7e9fd /boot xfs defaults 0 0

UUID=550292ec-0430-46fd-8a63-d15144b85091 swap swap defaults 0 0

UUID=9C39-047B /boot/efi vfat defaults 0 2

#HANA filesystems

192.168.228.11:/c890_01_data /hana/data/FLX/mnt00001 nfs rw,bg,vers=4,minorversion=1,hard,timeo=600,rsize=1048576,wsize=1048576,intr,noatime,nolock 0 0

192.168.228.11:/c890_02_data /hana/data/FLX/mnt00002 nfs rw,bg,vers=4,minorversion=1,hard,timeo=600,rsize=1048576,wsize=1048576,intr,noatime,nolock 0 0

192.168.228.12:/c890_01_log /hana/log/FLX/mnt00001 nfs rw,bg,vers=4,minorversion=1,hard,timeo=600,rsize=1048576,wsize=1048576,intr,noatime,nolock 0 0

192.168.228.12:/c890_02_log /hana/log/FLX/mnt00002 nfs rw,bg,vers=4,minorversion=1,hard,timeo=600,rsize=1048576,wsize=1048576,intr,noatime,nolock 0 0

192.168.228.12:/FLX_shared /hana/shared nfs rw,bg,vers=4,minorversion=1,hard,timeo=600,rsize=1048576,wsize=1048576,intr,noatime,nolock 0 0

192.168.228.11:/usr_sap_c890s/usr-sap-host2 /usr/sap/FLX nfs rw,bg,vers=4,minorversion=1,hard,timeo=600,rsize=1048576,wsize=1048576,intr,noatime,nolock 0 0
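Before the first mount, the mount point directories must exist on each host. The sketch below extracts the mount points of all NFS entries from an fstab file and prints the corresponding mkdir commands instead of running them; the function name is illustrative.

```shell
#!/usr/bin/env bash
# Sketch: list the mount points of all NFS entries in an fstab file so that the
# directories can be created before mounting.

nfs_mount_points() {
  local fstab="${1:-/etc/fstab}"
  [ -r "$fstab" ] || return 0
  # Field 2 is the mount point, field 3 the file system type.
  awk '$1 !~ /^#/ && ($3 == "nfs" || $3 == "nfs4") { print $2 }' "$fstab"
}

# Print the mkdir commands instead of running them.
nfs_mount_points | while read -r dir; do
  echo "mkdir -p $dir"
done
```

After the directories are created, mount -a mounts all entries, and the result can be checked with df -h or findmnt.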

1. Change to the folder <installation path>/DATA_UNITS/HDB_LCM_Linux_X86_64.

2. Adapt the following command according to your SAP SID, SAP system number, host name, and required components:

# ./hdblcm --action install --components=server,client --install_hostagent \
--number <SAP system number> --sapmnt=/hana/shared --sid=<SID> \
--storage_cfg=/tmp/cisco --hostname=<hostname> \
--certificates_hostmap=<hostname>=<map name>

3. Switch the user to <sid>adm to verify that SAP HANA is up and running:

# sapcontrol -nr <SAP system number> -function GetProcessList

# sapcontrol -nr <SAP system number> -function GetSystemInstanceList

4. Be sure that the internode network setup is complete and working. Change the SAP HANA interservice network communication to internal:

# /hana/shared/<SID>/hdblcm/hdblcm --action=configure_internal_network --listen_interface=internal --internal_address=<internode network>/<network mask>
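The --internal_address value is the network address of the internode network, not the address of an individual host. As a small worked example, the following sketch derives the network address in CIDR notation from a host IPv4 address and prefix length; the address 192.168.220.201/24 is a hypothetical example.

```shell
#!/usr/bin/env bash
# Sketch: derive the network address in CIDR notation from a host IPv4 address
# and prefix length. The example address is hypothetical.

network_cidr() {
  local ip="$1" prefix="$2" a b c d rest
  # Split the dotted address into its four octets.
  a="${ip%%.*}"; rest="${ip#*.}"
  b="${rest%%.*}"; rest="${rest#*.}"
  c="${rest%%.*}"; d="${rest#*.}"
  local addr=$(( (a << 24) | (b << 16) | (c << 8) | d ))
  local mask=$(( prefix == 0 ? 0 : (4294967295 << (32 - prefix)) & 4294967295 ))
  local net=$(( addr & mask ))
  printf '%d.%d.%d.%d/%d\n' $(( (net >> 24) & 255 )) $(( (net >> 16) & 255 )) \
    $(( (net >> 8) & 255 )) $(( net & 255 )) "$prefix"
}

network_cidr 192.168.220.201 24
```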

Cisco uses best-in-class server and network components as a foundation for a variety of enterprise application workloads. With Cisco UCS C890 M5 Rack Servers for SAP HANA, you can deploy a flexible, converged infrastructure solution designed for mission-critical workloads for which performance and memory size are important attributes.

The eight-socket server delivers exceptional performance and is sized, configured, and deployed to match the SAP HANA appliance key performance metrics demanded by SAP SE. With standard configuration options including eight 25-Gbps SFP Ethernet ports and four 32-Gbps Fibre Channel ports, this rack server is well suited for integration into a new or existing FlexPod environment.

With Cisco UCS C890 M5 server and FlexPod integration, customers benefit from the scalability and flexibility of an infrastructure platform composed of prevalidated computing, networking, and storage components.

For additional information, see the resources listed in this section.

Cisco Unified Computing System

● Cisco UCS C890 M5 Rack Server data sheet: https://www.cisco.com/c/en/us/products/collateral/servers-unified-computing/ucs-c-series-rack-servers/datasheet-c78-744647.html

● Intel Optane persistent memory and SAP HANA platform configuration: https://www.intel.com/content/www/us/en/big-data/partners/sap/sap-hana-and-intel-optane-configuration-guide.html

● Cisco UCS for SAP HANA with Intel Optane Data Center Persistent Memory Module (DCPMM): https://www.cisco.com/c/dam/en/us/products/servers-unified-computing/ucs-b-series-blade-servers/whitepaper-c11-742627.pdf

● Cisco Intersight platform:

https://www.cisco.com/c/en/us/products/servers-unified-computing/intersight/index.html

Network and management

● Cisco MDS 9000 Family multilayer switches:

http://www.cisco.com/c/en/us/products/storage-networking/mds-9000-series-multilayer-switches/index.html

● Cisco Nexus 9000 Series Switches:

https://www.cisco.com/c/en/us/products/switches/nexus-9000-series-switches/index.html

SAP HANA

● SAP HANA platform on SAP Help portal:

https://help.sap.com/viewer/p/SAP_HANA_PLATFORM

● SAP HANA TDI overview:

https://www.sap.com/documents/2017/09/e6519450-d47c-0010-82c7-eda71af511fa.html

● SAP HANA TDI storage requirements:

https://www.sap.com/documents/2015/03/74cdb554-5a7c-0010-82c7-eda71af511fa.html

● SAP HANA network requirements:

https://www.sap.com/documents/2016/08/1cd2c2fb-807c-0010-82c7-eda71af511fa.html

● SAP HANA business continuity with SAP HANA system replication and SUSE cluster:

https://www.cisco.com/c/en/us/solutions/collateral/data-center-virtualization/sap-applications-on-cisco-ucs/whitepaper-C11-734028.html

● SAP HANA high availability with SAP HANA system replication and RHEL cluster:

https://www.cisco.com/c/en/us/solutions/collateral/data-center-virtualization/sap-applications-on-cisco-ucs/whitepaper-c11-735382.pdf

Interoperability matrixes

● Cisco Nexus and Cisco MDS interoperability matrix:

https://www.cisco.com/c/en/us/td/docs/switches/datacenter/mds9000/interoperability/matrix/intmatrx/Matrix1.html

● SAP Note 2235581: SAP HANA: Supported Operating Systems:

https://launchpad.support.sap.com/#/notes/2235581

● SAP certified and supported SAP HANA hardware:

https://www.sap.com/dmc/exp/2014-09-02-hana-hardware/enEN/index.html

NetApp

● NetApp technical reports:

◦ TR-4436-SAP HANA on NetApp AFF Systems with FCP

◦ TR-4614-SAP HANA Backup and Recovery with SnapCenter

◦ TR-4646-SAP HANA Disaster Recovery with Storage Replication

For comments and suggestions about this guide and related guides, join the discussion on Cisco Community at https://cs.co/en-cvds.