FlexPod Datacenter for SAP Solution using FibreChannel SAN with Cisco UCS Manager 4.0 and NetApp ONTAP 9.7


Bias-Free Language

The documentation set for this product strives to use bias-free language. For the purposes of this documentation set, bias-free is defined as language that does not imply discrimination based on age, disability, gender, racial identity, ethnic identity, sexual orientation, socioeconomic status, and intersectionality. Exceptions may be present in the documentation due to language that is hardcoded in the user interfaces of the product software, language used based on RFP documentation, or language that is used by a referenced third-party product. Learn more about how Cisco is using Inclusive Language.


Deployment Guide for FibreChannel-based FlexPod Datacenter Solution for SAP and SAP HANA with Cisco UCS 4th Generation and NetApp ONTAP 9.7

Published: December 2020

In partnership with NetApp.

About the Cisco Validated Design Program

The Cisco Validated Design (CVD) program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, go to:

http://www.cisco.com/go/designzone.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

CCDE, CCENT, Cisco Eos, Cisco Lumin, Cisco Nexus, Cisco StadiumVision, Cisco TelePresence, Cisco WebEx, the Cisco logo, DCE, and Welcome to the Human Network are trademarks; Changing the Way We Work, Live, Play, and Learn and Cisco Store are service marks; and Access Registrar, Aironet, AsyncOS, Bringing the Meeting To You, Catalyst, CCDA, CCDP, CCIE, CCIP, CCNA, CCNP, CCSP, CCVP, Cisco, the Cisco Certified Internetwork Expert logo, Cisco IOS, Cisco Press, Cisco Systems, Cisco Systems Capital, the Cisco Systems logo, Cisco Unified Computing System (Cisco UCS), Cisco UCS B-Series Blade Servers, Cisco UCS C-Series Rack Servers, Cisco UCS S-Series Storage Servers, Cisco UCS Manager, Cisco UCS Management Software, Cisco Unified Fabric, Cisco Application Centric Infrastructure, Cisco Nexus 9000 Series, Cisco Nexus 7000 Series, Cisco Prime Data Center Network Manager, Cisco NX-OS Software, Cisco MDS Series, Cisco Unity, Collaboration Without Limitation, EtherFast, EtherSwitch, Event Center, Fast Step, Follow Me Browsing, FormShare, GigaDrive, HomeLink, Internet Quotient, IOS, iPhone, iQuick Study, LightStream, Linksys, MediaTone, MeetingPlace, MeetingPlace Chime Sound, MGX, Networkers, Networking Academy, Network Registrar, PCNow, PIX, PowerPanels, ProConnect, ScriptShare, SenderBase, SMARTnet, Spectrum Expert, StackWise, The Fastest Way to Increase Your Internet Quotient, TransPath, WebEx, and the WebEx logo are registered trademarks of Cisco Systems, Inc. and/or its affiliates in the United States and certain other countries.

All other trademarks mentioned in this document or website are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (0809R)

© 2020 Cisco Systems, Inc. All rights reserved.

Contents

Deployment Hardware and Software

System Provisioning for SAP HANA

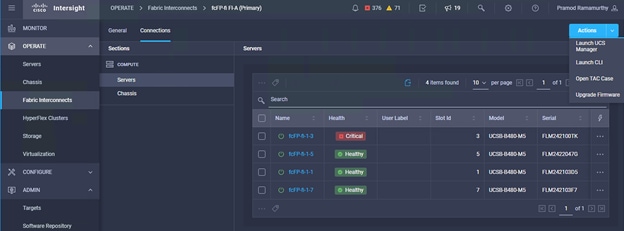

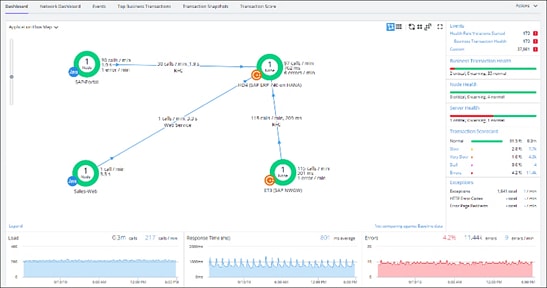

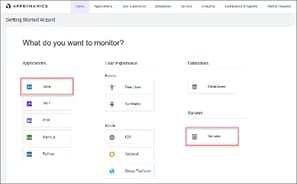

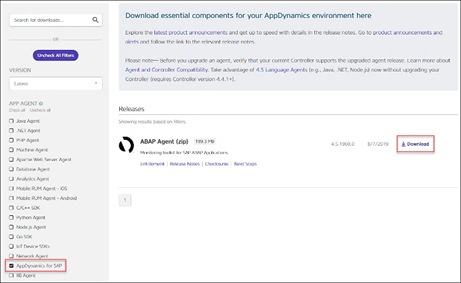

Monitor SAP HANA with AppDynamics

Cisco and NetApp have partnered to deliver FlexPod, which serves as the foundation for a variety of SAP and SAP HANA workloads and enables efficient architectural designs that are based on customer requirements. The FlexPod Datacenter solution is a validated approach for deploying Cisco and NetApp technologies as a shared infrastructure platform for SAP HANA implementations in Tailored Data Center Integration (TDI) mode.

This document describes the Cisco and NetApp FlexPod Datacenter solution for SAP HANA, built with NetApp ONTAP 9.7 on NetApp AFF A400 storage and Cisco UCS Manager unified software release 4.1(1) on servers with 2nd Generation Intel Xeon Scalable Processors.

FlexPod Datacenter with NetApp ONTAP 9.7 and Cisco UCS unified software release 4.1(1) is a predesigned, best-practice data center architecture built on the Cisco Unified Computing System (Cisco UCS), the Cisco Nexus 9000 family of switches, Cisco MDS 9000 multilayer fabric switches, and NetApp AFF A-Series storage arrays running the ONTAP 9.7 storage OS.

The current industry trend in data center design is toward shared infrastructures. With pre-validated IT platforms, enterprise customers have embarked on the journey to the cloud by moving away from application silos and toward shared infrastructure that can be quickly deployed, thereby increasing agility and reducing costs.

Cisco and NetApp have partnered to deliver FlexPod, which uses best-of-breed storage, server, and network components to serve as the foundation for a variety of SAP workloads in general and SAP HANA in particular, enabling efficient architectural designs that can be quickly and confidently deployed.

The audience for this document includes, but is not limited to, sales engineers, field consultants, professional services, IT managers, partner engineers, and customers who want to take advantage of an infrastructure built to deliver IT efficiency and enable IT innovation.

This document provides a step-by-step configuration and implementation guide for the FlexPod Datacenter with Cisco UCS Fabric Interconnects, NetApp AFF storage, Cisco MDS, and Cisco Nexus 9000 solution.

The following design elements distinguish this version of FlexPod from previous FlexPod architectures:

● Cisco UCS 4.1(1) unified software release, Cisco UCS B480 M5 with 2nd Generation Intel Xeon Scalable Processors, and Cisco 1400 Series Virtual Interface Cards (VICs)

● Cisco UCS 6454 Fabric Interconnects

● Cisco UCS 2408 Fabric Extender

● Cisco Intersight Software as a Service (SaaS) Management

● NetApp AFF A400 Storage Controller

● NetApp ONTAP® 9.7

● NetApp SnapCenter and NetApp SnapCenter Plug-in for SAP HANA Version 4.3

● Fibre Channel and NFS storage design

● Unified Extensible Firmware Interface (UEFI) Secure Boot with SLES for SAP Applications 15 SP2 and RHEL for SAP HANA 8.1 Operating Systems.

● 32-Gbps Fibre Channel connectivity

Deployment Hardware and Software

FlexPod is a defined set of hardware and software that serves as an integrated foundation for both virtualized and non-virtualized SAP HANA workloads. The FlexPod design is flexible enough that the networking, computing, and storage can fit in one data center rack or be deployed according to a customer's data center design. Port density enables the networking components to accommodate multiple configurations of this kind.

One benefit of the FlexPod architecture is the ability to customize or "flex" the environment to suit a customer's requirements. A FlexPod can easily be scaled as requirements and demand change. The unit can be scaled both up (adding resources to a FlexPod unit) and out (adding more FlexPod units). The reference architecture detailed in this document highlights the resiliency, cost benefit, and ease of deployment of a Fibre Channel and IP-based storage solution. A storage system capable of serving multiple protocols across a single interface allows for customer choice and investment protection because it truly is a wire-once architecture.

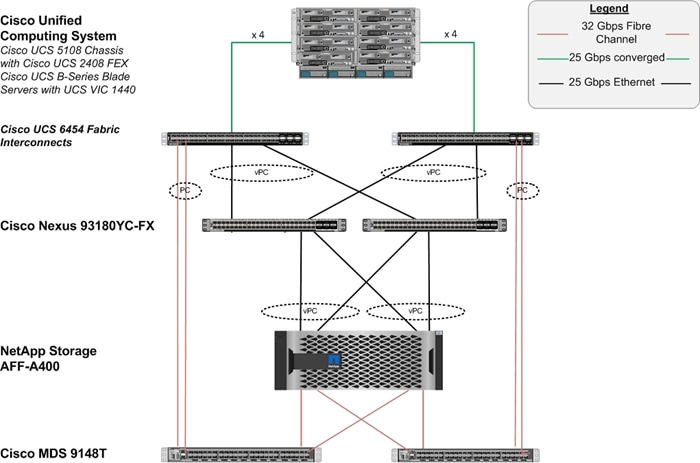

Figure 1 shows the FlexPod components and the network connections for a configuration with the Cisco UCS 6454 Fabric Interconnects. This design has port-channeled 25 Gb Ethernet connections between the Cisco UCS 5108 Blade Chassis and the Cisco UCS Fabric Interconnects through the Cisco UCS 2408 Fabric Extenders, 25 Gb Ethernet connections between the Cisco UCS Fabric Interconnects and the Cisco Nexus 9000 switches, and 25 Gb Ethernet connections between the Cisco Nexus 9000 switches and the NetApp AFF A400 storage array. This infrastructure is extended with Cisco MDS switches placed between the Cisco UCS Fabric Interconnects and the NetApp AFF A400, which provide FC-booted hosts with 32 Gb FC block-level access to storage that also serves the SAP HANA persistence.

Figure 1. FlexPod with Cisco UCS 6454 Fabric Interconnects and NetApp AFF A400

The reference architecture hardware configuration includes:

● Two Cisco Nexus 93180YC-FX switches

● Two Cisco UCS 6454 fabric interconnects

● Two Cisco MDS 9148T multilayer fabric switches

● One NetApp AFF A400 (HA pair) running ONTAP 9.7 with an external NS224 NVMe SSD disk shelf

Table 1 lists the software revisions for this solution.

Table 1. Software Revisions

| Layer | Device | Image | Comments |
|---|---|---|---|
| Compute | Cisco UCS 6454 Fabric Interconnects, Cisco UCS B480 M5 with 2nd Generation Intel Xeon Scalable Processors | 4.1(1d) | Includes the Cisco UCS 2408 Fabric Extender, Cisco UCS Manager, Cisco UCS VIC 1440, and Cisco UCS VIC 1480 |
| Network | Cisco Nexus 93180YC-FX NX-OS | 9.2(4) | |
| | Cisco MDS 9148T | 8.4(1a) | |
| Storage | NetApp AFF A400 with NVMe NS224 external disk shelf | ONTAP 9.7 | |
| Software | Cisco UCS Manager | 4.1(1d) | |
| Management | Cisco Intersight | 1.0.9-148 | |
| Software | Operating Systems | SLES for SAP 15 SP2 and RHEL for SAP HANA 8.1 | |

This document explains how to configure a fully redundant, highly available FlexPod unit with ONTAP storage. Each step therefore identifies the component being configured, either 01 and 02 or A and B. For example, node01 and node02 identify the two NetApp storage controllers provisioned in this document, and Cisco Nexus A and Cisco Nexus B identify the pair of Cisco Nexus switches that are configured. The Cisco UCS Fabric Interconnects are identified the same way. Additionally, this document details the steps for provisioning multiple Cisco UCS hosts, which are identified as HANA-scaleup-01, HANA-scaleout-01, and so on, to represent infrastructure hosts deployed to each of the fabric interconnects. Finally, to indicate that you should include information pertinent to your environment in a given step, <text> appears as part of the command structure. See the following example for the network port vlan create command:

Usage:

network port vlan create ?

[-node] <nodename> Node

{ [-vlan-name] {<netport>|<ifgrp>} VLAN Name

| -port {<netport>|<ifgrp>} Associated Network Port

[-vlan-id] <integer> } Network Switch VLAN Identifier

Example:

network port vlan create -node <node01> -vlan-name a0a-<vlan id>
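When many commands are prepared from templates like the one above, a small helper can substitute the <text> placeholders and refuse to produce a command while any placeholder is still unfilled. The following Python sketch shows one way to do this. The function name fill and the example node name aff_a400-01 are hypothetical and are not part of any Cisco or NetApp tool.

```python
import re

# A placeholder is text in angle brackets that contains no further brackets,
# for example <node01> or <vlan id>.
PLACEHOLDER = re.compile(r"<([^<>]+)>")


def fill(template: str, values: dict) -> str:
    """Substitute each <placeholder> in template with values[placeholder].

    Raises KeyError listing every placeholder without a value, so an
    incomplete command is never produced.
    """
    missing = sorted({name for name in PLACEHOLDER.findall(template) if name not in values})
    if missing:
        raise KeyError("no value for: " + ", ".join(missing))
    return PLACEHOLDER.sub(lambda m: values[m.group(1)], template)


if __name__ == "__main__":
    # Hypothetical site values, for illustration only.
    site = {"node01": "aff_a400-01", "vlan id": "228"}
    print(fill("network port vlan create -node <node01> -vlan-name a0a-<vlan id>", site))
    # prints: network port vlan create -node aff_a400-01 -vlan-name a0a-228
```

Keeping all site values in one dictionary also makes it easy to review them against the configuration tables in this guide before any command is run.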

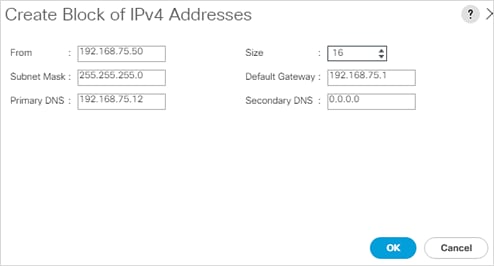

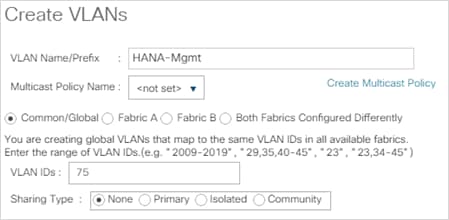

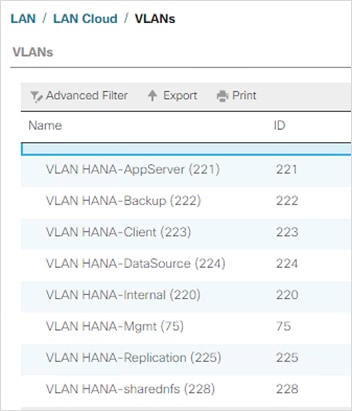

This document is intended to enable you to fully configure the customer environment. In this process, various steps require you to insert customer-specific naming conventions, IP addresses, and VLAN schemes, as well as to record appropriate MAC addresses. Table 2 describes the VLANs necessary for deployment as outlined in this guide.

Table 2. Necessary VLANs

| VLAN Name | VLAN Purpose | ID Used in Validating This Document |
|---|---|---|
| Out-of-Band Mgmt | VLAN for out-of-band management interfaces | 75 |
| Native | VLAN to which untagged frames are assigned | 2 |

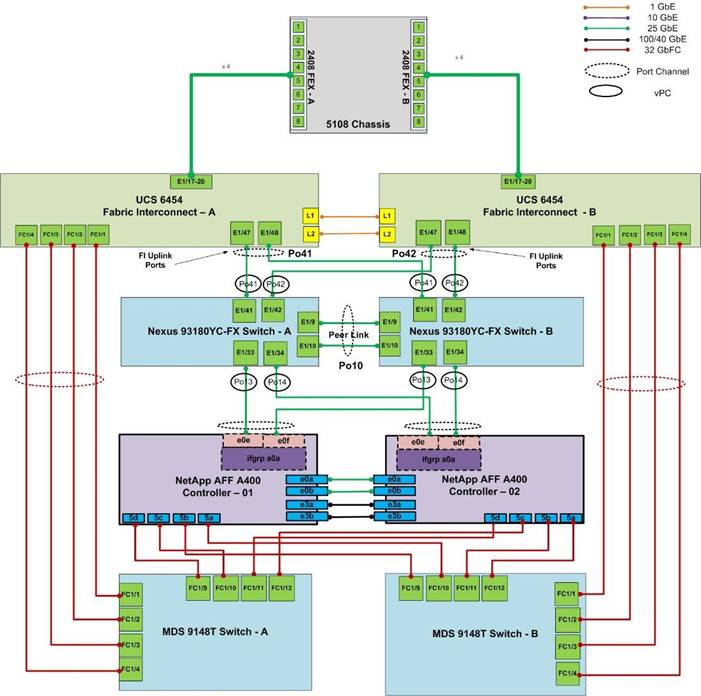

The information in this section is provided as a reference for cabling the physical equipment in a FlexPod environment. To simplify cabling, a cabling diagram is provided.

The cabling diagram in this section contains details for the prescribed and supported configuration of the NetApp AFF A400 running NetApp ONTAP® 9.7.


For any modifications of this prescribed architecture, consult the NetApp Interoperability Matrix Tool (IMT).

This document assumes that out-of-band management ports are plugged into an existing management infrastructure at the deployment site. These interfaces will be used in various configuration steps.

The NetApp storage controller and disk shelves should be connected according to best practices for the specific storage controller and disk shelves. For disk shelf cabling, refer to NetApp Support.

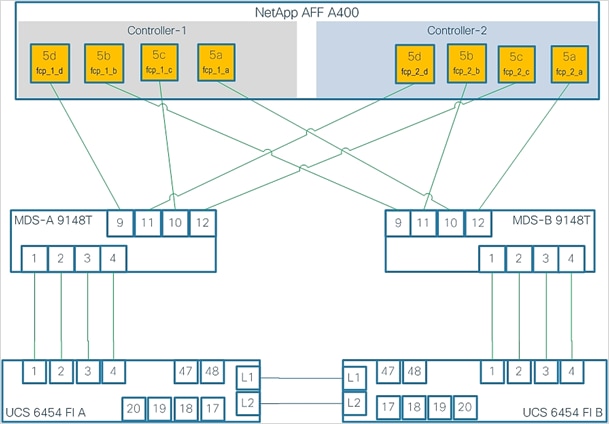

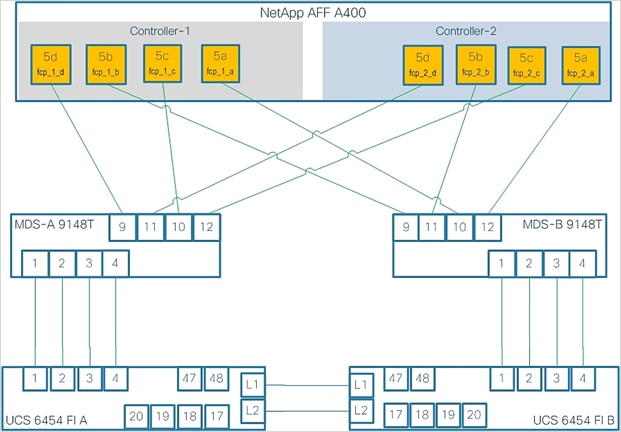

Figure 2 details the cable connections used in the validation lab for the FlexPod topology based on the Cisco UCS 6454 fabric interconnect. Four 32-Gb FC uplinks from the MDS switches connect as port channels to each Cisco UCS Fabric Interconnect, and a total of eight 32-Gb links connect the MDS switches to the NetApp AFF controllers. In addition, 25-Gb links connect the Cisco UCS Fabric Interconnects and the NetApp AFF controllers to the Cisco Nexus switches. Additional 1-Gb management connections are needed for an out-of-band network switch that sits apart from the FlexPod infrastructure. Each Cisco UCS fabric interconnect, each Cisco Nexus switch, and each AFF controller has a connection to the out-of-band network switch.
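The link counts in the cabling description translate into aggregate nominal Fibre Channel bandwidth as follows. This is simple arithmetic on line rates, assuming each Cisco UCS Fabric Interconnect forms its own fabric (A or B) with one of the two MDS switches; it ignores protocol overhead and says nothing about achievable throughput.

```python
# Nominal link counts and speeds from the cabling description; these are
# line rates, not measured throughput.
FC_LINK_GBPS = 32
UPLINKS_PER_FI = 4      # 32-Gb FC links in the port channel from MDS to each FI
FABRICS = 2             # assumption: fabric A and fabric B, one FI and one MDS each
MDS_TO_AFF_LINKS = 8    # total 32-Gb links from both MDS switches to the AFF controllers

per_fabric_gbps = UPLINKS_PER_FI * FC_LINK_GBPS
fi_uplink_total_gbps = FABRICS * per_fabric_gbps
storage_side_gbps = MDS_TO_AFF_LINKS * FC_LINK_GBPS

print(f"FI uplink bandwidth per fabric:    {per_fabric_gbps} Gb")       # 128 Gb
print(f"FI uplink bandwidth, both fabrics: {fi_uplink_total_gbps} Gb")  # 256 Gb
print(f"MDS-to-AFF bandwidth, total:       {storage_side_gbps} Gb")     # 256 Gb
```

Under these assumptions, the FI-facing uplinks (256 Gb across both fabrics) match the storage-facing links (256 Gb) at line rate.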

Figure 2. FlexPod Cabling with Cisco UCS 6454 Fabric Interconnect and NetApp AFF A400

This section provides a detailed procedure to configure the Cisco Nexus 9000 switches for use in a FlexPod environment.


Follow the steps in this section precisely because failure to do so could result in an improper configuration.

Follow the physical connectivity guidelines for FlexPod as explained in the section FlexPod Cabling.

The following procedures describe how to configure the Cisco Nexus switches for use in a base FlexPod environment. This procedure assumes you’re using Cisco Nexus 9000 7.0(3)I7(6), the Cisco suggested Nexus switch release at the time of this validation.

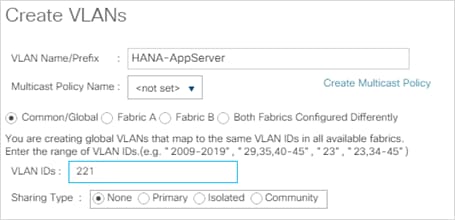

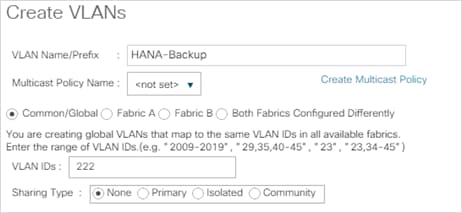

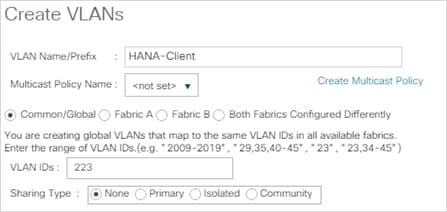

Table 3 lists the configuration variables (host names, management addresses, NTP server, vPC domain ID, and VLAN IDs) necessary for deployment as outlined in this guide.

Table 3. Configuration Variables

| Variable Name |

VLAN purpose |

Value used in Validation Setup |

| <nexus-A-hostname> |

Cisco Nexus A host name |

fp-nexus-A |

| <nexus-A-mgmt0-ip> |

Out-of-band management Cisco Nexus A IP address |

192.168.75.2 |

| <nexus-A-mgmt0-netmask> |

Out-of-band management network netmask |

255.255.255.0 |

| <nexus-A-mgmt0-gw> |

Out-of-band management network default gateway |

192.168.75.1 |

| <nexus-B-hostname> |

Cisco Nexus B host name |

fp-nexus-B |

| <nexus-B-mgmt0-ip> |

Out-of-band management Cisco Nexus B IP address |

192.168.75.3 |

| <nexus-B-mgmt0-netmask>> |

Out-of-band management network netmask |

255.255.255.0 |

| <nexus-B-mgmt0-gw> |

Out-of-band management network default gateway |

192.168.75.1 |

| <global-ntp-server-ip>> |

NTP server IP address |

192.168.75.19 |

| <nexus-vpc-domain-id>> |

Unique Cisco Nexus switch VPC domain ID |

10 |

| <hana-admin-vlan-id> |

HANA node administration VLAN |

75 |

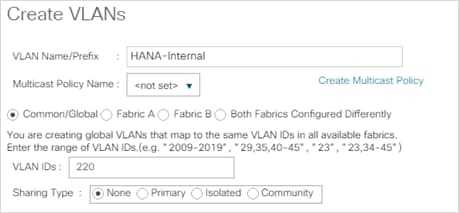

| <hana-internode-vlan-id> |

HANA server-server communication network VLAN ID |

220 |

| <hana-backup-vlan-id> |

HANA node backup VLAN |

222 |

| <hana-client-vlan-id> |

Client Network for HANA VLAN ID |

223 |

| <hana-appserver-vlan-id> |

Application Server Network for HANA VLAN ID |

221 |

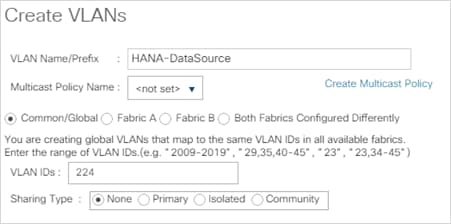

| <hana-datasource-vlan-id> |

Data source Network for HANA VLAN ID |

224 |

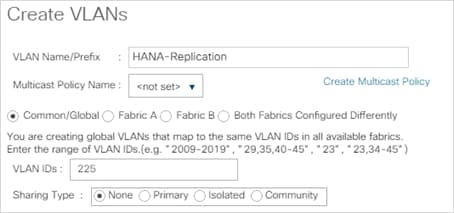

| <hana-replication-vlan-id> |

Replication Network for HANA VLAN ID |

225 |

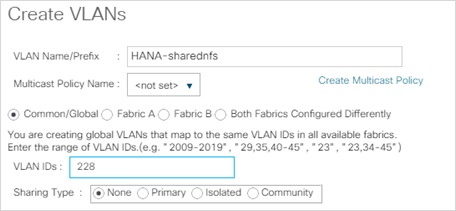

| <hana-sharednfs-vlan-id> |

Shared NFS network for /hana/shared access |

228 |
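Because the vPC peer-keepalive configured later uses the mgmt0 addresses, and every VLAN ID is entered on both switches, it can help to sanity-check the Table 3 values before typing them in. The following Python sketch, using only the standard ipaddress module, checks that each gateway lies in its switch's management subnet, that the two switches do not share an address, and that VLAN IDs are unique and within 1-4094. The function name check_variables and the dictionary layout are hypothetical.

```python
import ipaddress

# Values from Table 3 (validation setup); substitute your own.
NEXUS_MGMT = {
    "nexus-A": ("192.168.75.2", "255.255.255.0", "192.168.75.1"),
    "nexus-B": ("192.168.75.3", "255.255.255.0", "192.168.75.1"),
}
HANA_VLANS = {
    "hana-admin": 75,
    "hana-internode": 220,
    "hana-appserver": 221,
    "hana-backup": 222,
    "hana-client": 223,
    "hana-datasource": 224,
    "hana-replication": 225,
    "hana-sharednfs": 228,
}


def check_variables(mgmt: dict, vlans: dict) -> list:
    """Return human-readable problems; an empty list means no issues were found."""
    problems = []
    addresses = {}
    for switch, (ip, mask, gateway) in mgmt.items():
        iface = ipaddress.ip_interface(f"{ip}/{mask}")
        if ipaddress.ip_address(gateway) not in iface.network:
            problems.append(f"{switch}: gateway {gateway} is outside {iface.network}")
        if iface.ip in addresses:
            problems.append(f"{switch}: {iface.ip} already used by {addresses[iface.ip]}")
        addresses.setdefault(iface.ip, switch)
    owners = {}
    for name, vid in vlans.items():
        if not 1 <= vid <= 4094:
            problems.append(f"{name}: VLAN ID {vid} is outside 1-4094")
        if vid in owners:
            problems.append(f"{name}: VLAN ID {vid} already used by {owners[vid]}")
        owners.setdefault(vid, name)
    return problems


if __name__ == "__main__":
    issues = check_variables(NEXUS_MGMT, HANA_VLANS)
    print("\n".join(issues) if issues else "No problems found")
```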

Cisco Nexus A

To set up the initial configuration for the Cisco Nexus A switch on <nexus-A-hostname>, follow these steps:

1. Configure the switch.

On initial boot and connection to the serial or console port of the switch, the NX-OS setup should automatically start and attempt to enter Power on Auto Provisioning.

Abort Power On Auto Provisioning [yes - continue with normal setup, skip - bypass password and basic configuration, no - continue with Power On Auto Provisioning] (yes/skip/no)[no]: yes

Disabling POAP.......Disabling POAP

poap: Rolling back, please wait... (This may take 5-15 minutes)

---- System Admin Account Setup ----

Do you want to enforce secure password standard (yes/no) [y]: Enter

Enter the password for "admin": <password>

Confirm the password for "admin": <password>

Would you like to enter the basic configuration dialog (yes/no): yes

Create another login account (yes/no) [n]: Enter

Configure read-only SNMP community string (yes/no) [n]: Enter

Configure read-write SNMP community string (yes/no) [n]: Enter

Enter the switch name: <nexus-A-hostname>

Continue with Out-of-band (mgmt0) management configuration? (yes/no) [y]: Enter

Mgmt0 IPv4 address: <nexus-A-mgmt0-ip>

Mgmt0 IPv4 netmask: <nexus-A-mgmt0-netmask>

Configure the default gateway? (yes/no) [y]: Enter

IPv4 address of the default gateway: <nexus-A-mgmt0-gw>

Configure advanced IP options? (yes/no) [n]: Enter

Enable the telnet service? (yes/no) [n]: Enter

Enable the ssh service? (yes/no) [y]: Enter

Type of ssh key you would like to generate (dsa/rsa) [rsa]: Enter

Number of rsa key bits <1024-2048> [1024]: Enter

Configure the ntp server? (yes/no) [n]: y

NTP server IPv4 address: <global-ntp-server-ip>

Configure default interface layer (L3/L2) [L2]: Enter

Configure default switchport interface state (shut/noshut) [noshut]: shut

Configure CoPP system profile (strict/moderate/lenient/dense/skip) [strict]: Enter

Would you like to edit the configuration? (yes/no) [n]: Enter

2. Review the configuration summary before enabling the configuration.

Use this configuration and save it? (yes/no) [y]: Enter

Cisco Nexus B

To set up the initial configuration for the Cisco Nexus B switch on <nexus-B-hostname>, follow these steps:

1. Configure the switch.


On initial boot and connection to the serial or console port of the switch, the NX-OS setup should automatically start and attempt to enter Power on Auto Provisioning.

Abort Power On Auto Provisioning [yes - continue with normal setup, skip - bypass password and basic configuration, no - continue with Power On Auto Provisioning] (yes/skip/no)[no]: yes

Disabling POAP.......Disabling POAP

poap: Rolling back, please wait... (This may take 5-15 minutes)

---- System Admin Account Setup ----

Do you want to enforce secure password standard (yes/no) [y]: Enter

Enter the password for "admin": <password>

Confirm the password for "admin": <password>

Would you like to enter the basic configuration dialog (yes/no): yes

Create another login account (yes/no) [n]: Enter

Configure read-only SNMP community string (yes/no) [n]: Enter

Configure read-write SNMP community string (yes/no) [n]: Enter

Enter the switch name: <nexus-B-hostname>

Continue with Out-of-band (mgmt0) management configuration? (yes/no) [y]: Enter

Mgmt0 IPv4 address: <nexus-B-mgmt0-ip>

Mgmt0 IPv4 netmask: <nexus-B-mgmt0-netmask>

Configure the default gateway? (yes/no) [y]: Enter

IPv4 address of the default gateway: <nexus-B-mgmt0-gw>

Configure advanced IP options? (yes/no) [n]: Enter

Enable the telnet service? (yes/no) [n]: Enter

Enable the ssh service? (yes/no) [y]: Enter

Type of ssh key you would like to generate (dsa/rsa) [rsa]: Enter

Number of rsa key bits <1024-2048> [1024]: Enter

Configure the ntp server? (yes/no) [n]: y

NTP server IPv4 address: <global-ntp-server-ip>

Configure default interface layer (L3/L2) [L2]: Enter

Configure default switchport interface state (shut/noshut) [noshut]: shut

Configure CoPP system profile (strict/moderate/lenient/dense/skip) [strict]: Enter

Would you like to edit the configuration? (yes/no) [n]: Enter

2. Review the configuration summary before enabling the configuration.

Use this configuration and save it? (yes/no) [y]: Enter

FlexPod Cisco Nexus Switch Configuration

Cisco Nexus A and Cisco Nexus B

To enable the required features on the Cisco Nexus switches, follow these steps:

1. Log in as admin.

2. Run the following commands to enable the required features:

config t

feature udld

feature interface-vlan

feature lacp

feature vpc

feature lldp

Cisco Nexus A and Cisco Nexus B

To create the necessary virtual local area networks (VLANs), follow this step on both switches:

1. From the global configuration mode, run the following commands:

vlan 75

name HANA-Node-Mgmt

vlan 220

name HANA-Internode

vlan 221

name HANA-AppServer

vlan 222

name HANA-Backup

vlan 223

name HANA-Client

vlan 224

name HANA-Datasource

vlan 225

name HANA-System-Replication

vlan 228

name HANA-sharednfs

exit
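Because the same VLAN block must be entered identically on both switches, generating it from a single table avoids typos between Cisco Nexus A and Cisco Nexus B. The following Python sketch renders the commands above from a dictionary of VLAN IDs and names. The function name vlan_commands is hypothetical, and the two-space indentation of the name lines is cosmetic only.

```python
# VLAN IDs and names exactly as created in the commands above.
HANA_VLANS = {
    75: "HANA-Node-Mgmt",
    220: "HANA-Internode",
    221: "HANA-AppServer",
    222: "HANA-Backup",
    223: "HANA-Client",
    224: "HANA-Datasource",
    225: "HANA-System-Replication",
    228: "HANA-sharednfs",
}


def vlan_commands(vlans: dict) -> str:
    """Render NX-OS global-configuration commands that create and name each VLAN."""
    lines = []
    for vid in sorted(vlans):
        if not 1 <= vid <= 4094:
            raise ValueError(f"VLAN ID {vid} is outside 1-4094")
        lines.append(f"vlan {vid}")
        lines.append(f"  name {vlans[vid]}")
    lines.append("exit")
    return "\n".join(lines)


if __name__ == "__main__":
    # Enter the output on both Cisco Nexus switches in global configuration mode.
    print(vlan_commands(HANA_VLANS))
```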

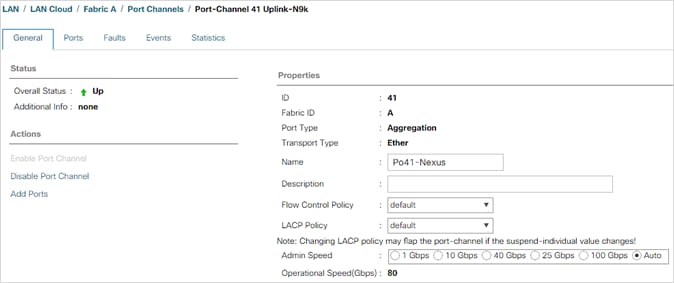

Create Port Channels and Assign Interfaces

Cisco Nexus A

To create the necessary port channels between devices, follow this step:

1. From the global configuration mode, run the following commands:

interface Po10

description vPC peer-link

interface Eth1/9-10

channel-group 10 mode active

no shutdown

interface Po13

description PC-NetApp-A

interface Eth1/33

description AFF-A400-A:e0e

channel-group 13 mode active

no shutdown

interface Po14

description PC-NetApp-B

interface Eth1/34

description AFF-A400-B:e0e

channel-group 14 mode active

no shutdown

interface Po41

description PC-from-FI-A

interface Eth1/41

description FI-A:1/47

channel-group 41 mode active

no shutdown

interface Po42

description PC-from-FI-B

interface Eth1/42

description FI-B:1/47

channel-group 42 mode active

no shutdown

exit

copy run start

Cisco Nexus B

To create the necessary port channels between devices, follow this step:

1. From the global configuration mode, run the following commands:

interface Po10

description vPC peer-link

interface Eth1/9-10

channel-group 10 mode active

no shutdown

interface Po13

description PC-NetApp-A

interface Eth1/33

description AFF-A400-A:e0f

channel-group 13 mode active

no shutdown

interface Po14

description PC-NetApp-B

interface Eth1/34

description AFF-A400-B:e0f

channel-group 14 mode active

no shutdown

interface Po41

description PC-from-FI-A

interface Eth1/41

description FI-A:1/48

channel-group 41 mode active

no shutdown

interface Po42

description PC-from-FI-B

interface Eth1/42

description FI-B:1/48

channel-group 42 mode active

no shutdown

exit

copy run start

Configure Port Channel Parameters

Cisco Nexus A and Cisco Nexus B

To configure port channel parameters, follow this step on both switches:

1. From the global configuration mode, run the following commands:

interface Po10

description vPC peer-link

switchport mode trunk

switchport trunk allowed vlan 75,220-225,228

spanning-tree port type network

interface Po13

description PC-NetApp-A

switchport mode trunk

switchport trunk allowed vlan 228

spanning-tree port type edge trunk

mtu 9216

interface Po14

description PC-NetApp-B

switchport mode trunk

switchport trunk allowed vlan 228

spanning-tree port type edge trunk

mtu 9216

interface Po41

description PC-from-FI-A

switchport mode trunk

switchport trunk allowed vlan 75,220-225,228

spanning-tree port type edge trunk

mtu 9216

interface Po42

description PC-from-FI-B

switchport mode trunk

switchport trunk allowed vlan 75,220-225,228

spanning-tree port type edge trunk

mtu 9216

exit

copy run start
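The allowed-VLAN lists use NX-OS range notation, so 75,220-225,228 stands for VLAN 75, VLANs 220 through 225, and VLAN 228. A commonly recommended vPC sanity check is that every VLAN allowed on a vPC member port channel is also allowed on the peer-link, and that no trunk references a VLAN that was never created. The following Python sketch performs both checks on the lists above; the names expand_vlans and check_trunks are hypothetical.

```python
def expand_vlans(spec: str) -> set:
    """Expand an NX-OS allowed-VLAN string such as '75,220-225,228' into a set of IDs."""
    vlans = set()
    for part in spec.split(","):
        part = part.strip()
        if "-" in part:
            low, high = (int(x) for x in part.split("-", 1))
            if low > high:
                raise ValueError(f"bad range {part!r}")
            vlans.update(range(low, high + 1))
        else:
            vlans.add(int(part))
    return vlans


# Allowed-VLAN lists from the port channel configuration above.
TRUNKS = {
    "Po10": "75,220-225,228",  # vPC peer-link
    "Po13": "228",             # NetApp controller A
    "Po14": "228",             # NetApp controller B
    "Po41": "75,220-225,228",  # Fabric Interconnect A
    "Po42": "75,220-225,228",  # Fabric Interconnect B
}
DEFINED = {75, 220, 221, 222, 223, 224, 225, 228}  # VLANs created earlier


def check_trunks(trunks: dict, defined: set, peer_link: str = "Po10") -> list:
    """Report undefined VLANs and VLANs missing from the peer-link."""
    problems = []
    peer = expand_vlans(trunks[peer_link])
    for po, spec in trunks.items():
        allowed = expand_vlans(spec)
        if allowed - defined:
            problems.append(f"{po}: VLANs {sorted(allowed - defined)} were never created")
        if po != peer_link and allowed - peer:
            problems.append(f"{po}: VLANs {sorted(allowed - peer)} missing from {peer_link}")
    return problems


if __name__ == "__main__":
    print(check_trunks(TRUNKS, DEFINED) or "All trunk VLAN lists are consistent")
```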

Configure Virtual Port Channels

Cisco Nexus A

To configure virtual port channels (vPCs) for switch A, follow this step:

1. From the global configuration mode, run the following commands:

vpc domain <nexus-vpc-domain-id>

role priority 10

peer-keepalive destination <nexus-B-mgmt0-ip> source <nexus-A-mgmt0-ip>

peer-switch

peer-gateway

auto-recovery

delay restore 150

interface Po10

vpc peer-link

interface Po13

vpc 13

interface Po14

vpc 14

interface Po41

vpc 41

interface Po42

vpc 42

exit

copy run start

Cisco Nexus B

To configure vPCs for switch B, follow this step:

1. From the global configuration mode, run the following commands:

vpc domain <nexus-vpc-domain-id>

role priority 20

peer-keepalive destination <nexus-A-mgmt0-ip> source <nexus-B-mgmt0-ip>

peer-switch

peer-gateway

auto-recovery

delay restore 150

interface Po10

vpc peer-link

interface Po13

vpc 13

interface Po14

vpc 14

interface Po41

vpc 41

interface Po42

vpc 42

exit

copy run start
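The two vPC blocks above differ only in the role priority and in the direction of the peer-keepalive, which points from each switch's own mgmt0 address to its peer's. Generating both from one function, as in the following Python sketch, keeps the vPC domain ID and vPC numbers identical on both peers, which vPC requires. The function name vpc_domain is hypothetical; the values come from Table 3 and the steps above.

```python
def vpc_domain(domain_id: int, role_priority: int, local_ip: str,
               peer_ip: str, vpc_ids: list) -> str:
    """Render the vPC domain and vPC assignments for one Cisco Nexus peer."""
    lines = [
        f"vpc domain {domain_id}",
        f"  role priority {role_priority}",
        # Each peer sends keepalives from its own mgmt0 address to the other's.
        f"  peer-keepalive destination {peer_ip} source {local_ip}",
        "  peer-switch",
        "  peer-gateway",
        "  auto-recovery",
        "  delay restore 150",
        "interface Po10",
        "  vpc peer-link",
    ]
    for vid in vpc_ids:
        # vPC numbers match the port channel numbers, as in this guide.
        lines += [f"interface Po{vid}", f"  vpc {vid}"]
    lines += ["exit", "copy run start"]
    return "\n".join(lines)


# Values from Table 3; role priorities follow the steps above.
A_IP, B_IP = "192.168.75.2", "192.168.75.3"
VPCS = [13, 14, 41, 42]
nexus_a = vpc_domain(10, 10, A_IP, B_IP, VPCS)
nexus_b = vpc_domain(10, 20, B_IP, A_IP, VPCS)

if __name__ == "__main__":
    print(nexus_a, nexus_b, sep="\n\n")
```

With these values, Cisco Nexus A (role priority 10) should become the vPC primary, because the lower role priority value is preferred.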

Uplink into Existing Network Infrastructure

Depending on the available network infrastructure, several methods and features can be used to uplink the FlexPod environment. If an existing Cisco Nexus environment is present, it is recommended to use vPCs to uplink the Cisco Nexus switches included in the FlexPod environment into the infrastructure. After you create the uplink vPC to the existing environment, run copy run start on each switch to save the configuration.

Complete Configuration Worksheet

Before running the setup script, complete the cluster setup worksheet and review the configuration worksheets in the ONTAP 9.7 Software Setup Guide to learn about configuring ONTAP software.

Table 4 and Table 5 list the information needed to configure two ONTAP nodes. Customize the cluster detail values with the information applicable to your deployment.

Table 4. ONTAP Software Installation Prerequisites

| Cluster Detail | Cluster Detail Value | Value Used in Validation Setup |
|---|---|---|
| Cluster node 01 IP address | <node01-mgmt-ip> | 192.168.75.29 |
| Cluster node 01 netmask | <node01-mgmt-mask> | 255.255.255.0 |
| Cluster node 01 gateway | <node01-mgmt-gateway> | 192.168.75.1 |
| Cluster node 02 IP address | <node02-mgmt-ip> | 192.168.75.30 |
| Cluster node 02 netmask | <node02-mgmt-mask> | 255.255.255.0 |
| Cluster node 02 gateway | <node02-mgmt-gateway> | 192.168.75.1 |
| Data ONTAP 9.7 URL | <url-boot-software> | |

Table 5 lists all the parameters required to set up the ONTAP cluster.

Table 5. ONTAP Cluster Prerequisites

| Cluster Detail | Cluster Detail Value | Value Used in Validation Setup |
|---|---|---|
| Cluster name | <clustername> | aff_a400 |
| ONTAP base license | <cluster-base-license-key> | |
| NFS license key | <nfs-license-key> | |
| FCP license key | <fcp-license-key> | |
| NetApp SnapRestore® license key | <snaprestore-license-key> | |
| NetApp SnapVault® license key | <snapvault-license-key> | |
| NetApp SnapMirror® license key | <snapmirror-license-key> | |
| NetApp FlexClone® license key | <flexclone-license-key> | |
| Cluster management IP address | <clustermgmt-ip> | 192.168.75.31 |
| Cluster management netmask | <clustermgmt-mask> | 255.255.255.0 |
| Cluster management gateway | <clustermgmt-gateway> | 192.168.75.1 |
| Node 01 service processor IP address | <node01-SP-ip> | |
| Node 01 service processor IP netmask | <node01-SP-mask> | |
| Node 01 service processor IP gateway | <node01-SP-gateway> | |
| Node 02 service processor IP address | <node02-SP-ip> | |
| Node 02 service processor IP netmask | <node02-SP-mask> | |
| DNS domain name | <dns-domain-name> | |
| DNS server IP address | <dns-ip> | 192.168.75.19 |
| Time zone | <timezone> | |
| NTP server IP address | <ntp-ip> | 192.168.75.19 |
| SNMP contact information | <snmp-contact> | |
| SNMP location | <snmp-location> | |
| DFM server or another fault management server FQDN to receive SNMP traps | <oncommand-um-server-fqdn> | |
| SNMPv1 community string | <snmp-community> | |
| Mail host to send NetApp AutoSupport® messages | <mailhost> | |
| Storage admin email for NetApp AutoSupport | <storage-admin-email> | |
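Before running the setup script, it can be worth confirming that the node and cluster management addresses from Table 4 and Table 5 are usable hosts in the gateway's subnet and do not collide with each other or with the gateway. The following Python sketch does this with the standard ipaddress module; the function name check_subnet is hypothetical, and the service processor addresses (left blank in the tables) can be added to the dictionary once they are known.

```python
import ipaddress


def check_subnet(addresses: dict, mask: str, gateway: str) -> list:
    """Check that each address is a usable, unique host in the gateway's subnet."""
    network = ipaddress.ip_network(f"{gateway}/{mask}", strict=False)
    gw = ipaddress.ip_address(gateway)
    problems, owners = [], {}
    for label, addr in addresses.items():
        ip = ipaddress.ip_address(addr)
        if ip not in network:
            problems.append(f"{label}: {ip} is outside {network}")
        elif ip in (network.network_address, network.broadcast_address):
            problems.append(f"{label}: {ip} is the network or broadcast address")
        if ip == gw:
            problems.append(f"{label}: {ip} is the gateway address")
        if ip in owners:
            problems.append(f"{label}: {ip} is already used by {owners[ip]}")
        owners.setdefault(ip, label)
    return problems


# Values used in the validation setup (Table 4 and Table 5).
ONTAP_MGMT = {
    "node01-mgmt": "192.168.75.29",
    "node02-mgmt": "192.168.75.30",
    "cluster-mgmt": "192.168.75.31",
}

if __name__ == "__main__":
    found = check_subnet(ONTAP_MGMT, "255.255.255.0", "192.168.75.1")
    print("\n".join(found) if found else "All management addresses look consistent")
```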

To set up an ONTAP cluster, follow these steps:

1. From a console port program attached to the storage controller A (node 01) console port, run the node setup script. This script appears when ONTAP 9.7 software boots on the node for the first time.

2. Follow the prompts to set up node 01:

Welcome to node setup.

You can enter the following commands at any time:

"help" or "?" - if you want to have a question clarified,

"back" - if you want to change previously answered questions, and

"exit" or "quit" - if you want to quit the setup wizard.

Any changes you made before quitting will be saved.

You can return to cluster setup at any time by typing “cluster setup”.

To accept a default or omit a question, do not enter a value.

This system will send event messages and weekly reports to NetApp Technical Support.

To disable this feature, enter "autosupport modify -support disable" within 24 hours.

Enabling AutoSupport can significantly speed problem determination and resolution should a problem occur on your system.

For further information on AutoSupport, see:

http://support.netapp.com/autosupport/

Type yes to confirm and continue {yes}: yes

Enter the node management interface port [e0M]: Enter

Enter the node management interface IP address: <node01-mgmt-ip>

Enter the node management interface netmask: <node01-mgmt-mask>

Enter the node management interface default gateway: <node01-mgmt-gateway>

A node management interface on port e0M with IP address <node01-mgmt-ip> has been created

Use your web browser to complete cluster setup by accessing https://<node01-mgmt-ip>

Otherwise press Enter to complete cluster setup using the command line interface:


Cluster setup can also be done using NetApp System Manager. This document describes the cluster setup using the CLI guided setup.

3. Press Enter to continue the cluster setup via CLI.

4. Create a new cluster:

Do you want to create a new cluster or join an existing cluster? {create, join}: create

5. Press Enter to accept the default option “no”, because this node is part of an HA pair and not a single-node cluster:

Do you intend for this node to be used as a single node cluster? {yes, no} [no]:

6. Review the cluster interface configuration. Enter yes (the default) to use the existing settings:

Existing cluster interface configuration found:

Port MTU IP Netmask

e3a 9000 169.254.142.30 255.255.0.0

e3b 9000 169.254.41.219 255.255.0.0

Do you want to use this configuration? {yes, no} [yes]:
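The cluster interconnect addresses in this output fall in the IPv4 link-local range 169.254.0.0/16, which is why the netmask is 255.255.0.0; these addresses are intended for the private cluster interconnect rather than for client access. A quick check with Python's standard ipaddress module confirms the prefix length and link-local status of the addresses shown above.

```python
import ipaddress

# Cluster interconnect ports and addresses as shown in the setup output above.
CLUSTER_PORTS = {"e3a": "169.254.142.30", "e3b": "169.254.41.219"}
NETMASK = "255.255.0.0"

for port, addr in CLUSTER_PORTS.items():
    iface = ipaddress.ip_interface(f"{addr}/{NETMASK}")
    # is_link_local is True for IPv4 addresses in 169.254.0.0/16.
    print(f"{port}: {iface.with_prefixlen}, link-local={iface.ip.is_link_local}")
```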

7. Provide the cluster administrator’s password:

Enter the cluster administrator's (username "admin") password:

Retype the password:

8. Create the cluster and provide a cluster name:

Step 1 of 5: Create a Cluster

You can type "back", "exit", or "help" at any question.

Enter the cluster name: <clustername>

Creating cluster <clustername>

.

Starting replication service

Starting replication service .

Starting replication service ..

System start up

System start up .

System start up ..

System start up ...

System start up ....

System start up .....

Updating LIF Manager

Vserver Management

Starting cluster support services

Starting cluster support services .

Starting cluster support services ..

Cluster <clustername> has been created.

9. Add the necessary license keys:

Step 2 of 5: Add Feature License Keys

You can type "back", "exit", or "help" at any question.

Enter an additional license key []:

10. Create the vserver for cluster administration:

Step 3 of 5: Set Up a Vserver for Cluster Administration

You can type "back", "exit", or "help" at any question.

Enter the cluster management interface port [e0e]: e0M

Enter the cluster management interface IP address: <clustermgmt-ip>

Enter the cluster management interface netmask: <clustermgmt-mask>

Enter the cluster management interface default gateway [<clustermgmt-gateway>]:

A cluster management interface on port e0M with IP address <clustermgmt-ip> has been created. You can use this address to connect to and manage the cluster.

11. Provide the DNS domain names and DNS server IP address:

Enter the DNS domain names: <dns-domain-name>

Enter the DNS server IP addresses: <dns-ip>

12. Finish the first part of the setup:

Step 4 of 5: Configure Storage Failover (SFO)

You can type "back", "exit", or "help" at any question.

SFO will be enabled when the partner joins the cluster.

Step 5 of 5: Set Up the Node

You can type "back", "exit", or "help" at any question.

Where is the controller located []: <snmp-location>

Cluster "<clustername>" has been created.

To complete cluster setup, you must join each additional node to the cluster

by running "system node show-discovered" and "cluster add-node" from a node in the cluster.

To complete system configuration, you can use either OnCommand System Manager

or the Data ONTAP command-line interface.

To access OnCommand System Manager, point your web browser to the cluster

management IP address (https://<clustermgmt-ip>).

To access the command-line interface, connect to the cluster management

IP address (for example, ssh admin@<clustermgmt-ip>).

13. From a console port program attached to the storage controller B (node 02) console port, run the node setup script. This script appears when ONTAP 9.7 software boots on the node for the first time.

14. Follow the prompts to set up node 02:

Welcome to the cluster setup wizard.

You can enter the following commands at any time:

"help" or "?" - if you want to have a question clarified,

"back" - if you want to change previously answered questions, and

"exit" or "quit" - if you want to quit the cluster setup wizard.

Any changes you made before quitting will be saved.

You can return to cluster setup at any time by typing "cluster setup".

To accept a default or omit a question, do not enter a value.

This system will send event messages and periodic reports to NetApp Technical

Support. To disable this feature, enter

autosupport modify -support disable

within 24 hours.

Enabling AutoSupport can significantly speed problem determination and

resolution, should a problem occur on your system.

For further information on AutoSupport, see:

http://support.netapp.com/autosupport/

Type yes to confirm and continue {yes}: yes

Enter the node management interface port [e0M]: Enter

Enter the node management interface IP address: <node02-mgmt-ip>

Enter the node management interface netmask: <node02-mgmt-mask>

Enter the node management interface default gateway: <node02-mgmt-gateway>

A node management interface on port e0M with IP address <node02-mgmt-ip> has been created

Use your web browser to complete cluster setup by accessing https://<node02-mgmt-ip>


Otherwise, press Enter to complete cluster setup using the command line interface:

15. Press Enter to continue the cluster setup via CLI.

16. Join the new cluster:

Do you want to create a new cluster or join an existing cluster? {create, join}:

join

17. Review the existing cluster interface configuration. Press Enter to accept the default (yes) and use these settings:

Existing cluster interface configuration found:

Port MTU IP Netmask

e3a 9000 169.254.57.171 255.255.0.0

e3b 9000 169.254.79.119 255.255.0.0

Do you want to use this configuration? {yes, no} [yes]:

18. Enter an IP address of the private cluster network from node 1:

Step 1 of 3: Join an Existing Cluster

You can type "back", "exit", or "help" at any question.

Enter the IP address of an interface on the private cluster network from the

cluster you want to join: 169.254.142.30

Joining cluster at address 169.254.142.30

.

Joining cluster

Joining cluster .

System start up

System start up .

System start up ..

System start up ...

System start up ....

System start up .....

Starting cluster support services

This node has joined the cluster <clustername>.

19. Finish the second part of the setup:

Step 2 of 3: Configure Storage Failover (SFO)

You can type "back", "exit", or "help" at any question.

SFO will be enabled when the partner joins the cluster.

Step 3 of 3: Set Up the Node

You can type "back", "exit", or "help" at any question.

This node has been joined to cluster "<clustername>".

To complete cluster setup, you must join each additional node to the cluster

by running "system node show-discovered" and "cluster add-node" from a node in the cluster.

To complete system configuration, you can use either OnCommand System Manager

or the Data ONTAP command-line interface.

To access OnCommand System Manager, point your web browser to the cluster

management IP address (https://<clustermgmt-ip>).

To access the command-line interface, connect to the cluster management

IP address (for example, ssh admin@<clustermgmt-ip>).

20. Open an SSH connection to either the cluster management IP address or the cluster host name.

21. Log in as the admin user with the password you provided earlier.

Set Auto-Revert on Cluster Management

To set the auto-revert parameter on the cluster management interface, run the following command:

network interface modify -vserver <clustername> -lif cluster_mgmt -auto-revert true


A storage virtual machine (SVM) is referred to as a Vserver (or vserver) in the GUI and CLI.

Set Up Management Broadcast Domain

By default, all network ports are included in the default broadcast domain. Network ports used for data services (for example, e0e and e0f) should be removed from the default broadcast domain, leaving just the management network ports (e0M).

To remove the data ports of both storage nodes from the default broadcast domain, run the following commands. Storage nodes are named after the cluster name with an appended number (for example, <clustername>-01 and <clustername>-02).

broadcast-domain remove-ports -broadcast-domain Default -ports <clustername>-01:e0f,<clustername>-01:e0e,<clustername>-02:e0f,<clustername>-02:e0e

broadcast-domain show

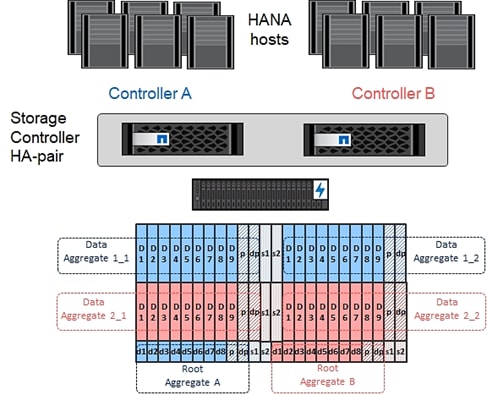


Advanced Data Partitioning (ADPv2) creates a root partition and two data partitions on each SSD drive in an All Flash FAS configuration. Disk auto assign should assign one data partition to each node in a high availability pair.

An aggregate containing the root volume for each storage controller is created during the ONTAP software setup process. To create additional aggregates, determine the aggregate name, the node on which to create it, and the number of disks it should contain.

To create new aggregates, run the following commands:

aggr create -aggregate aggr1_1 -node <clustername>-01 -diskcount 11

aggr create -aggregate aggr1_2 -node <clustername>-02 -diskcount 11

aggr create -aggregate aggr2_1 -node <clustername>-01 -diskcount 11

aggr create -aggregate aggr2_2 -node <clustername>-02 -diskcount 11


Use all disks except for two spares to create the aggregates. In this example, 11 disks per aggregate were used.


The aggregate cannot be created until the disk zeroing completes. Run the aggr show command to display aggregate creation status. Do not proceed until all are online.
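The disk count of 11 can be sanity-checked with a little arithmetic. The following Python sketch assumes, hypothetically, 24 SSDs partitioned with ADPv2, so each node owns one data partition per drive; it keeps two spares per node and splits the remaining partitions evenly across the node's two aggregates. The drive count and the function names are illustrative assumptions, not values or tools from ONTAP.

```python
"""Sketch: derive the -diskcount value for the aggr create commands.

Assumption (hypothetical): 24 SSDs with ADPv2, two data partitions per
drive, one of them assigned to each node of the HA pair.
"""


def data_partitions_per_node(drive_count: int, nodes: int = 2) -> int:
    """ADPv2 creates two data partitions per drive, shared across the HA pair."""
    return (2 * drive_count) // nodes


def disks_per_aggregate(partitions: int, spares: int = 2, aggregates: int = 2) -> int:
    """Split all of a node's non-spare partitions evenly across its aggregates."""
    usable = partitions - spares
    if usable <= 0:
        raise ValueError("no partitions left after reserving spares")
    if usable % aggregates:
        raise ValueError("usable partitions do not divide evenly across aggregates")
    return usable // aggregates


if __name__ == "__main__":
    per_node = data_partitions_per_node(24)
    print(per_node, disks_per_aggregate(per_node))  # prints: 24 11
```

With a different drive count the result changes; for example, 12 drives would leave five partitions per aggregate.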

Optional: Rename the root aggregate on node 01 to match the naming convention for this aggregate on node 02. The aggregate is automatically renamed if system-guided setup is used.

aggr show

aggr rename -aggregate aggr0 -newname <node01-rootaggrname>

To confirm that storage failover is enabled, run the following commands for a failover pair:

1. Verify the status of storage failover.

storage failover show


Both <clustername>-01 and <clustername>-02 must be capable of performing a takeover. Continue with step 3 if the nodes are capable of performing a takeover.

2. Enable failover on one of the two nodes.

storage failover modify -node <clustername>-01 -enabled true


Enabling failover on one node enables it for both nodes.

3. Verify the HA status for a two-node cluster.


This step is not applicable for clusters with more than two nodes.

cluster ha show

4. Continue with step 6 if high availability is configured.

5. Only enable HA mode for two-node clusters. Do not run this command for clusters with more than two nodes because it causes problems with failover.

cluster ha modify -configured true

Do you want to continue? {y|n}: y

6. Verify that hardware assist is correctly configured and, if needed, modify the partner IP address.

storage failover hwassist show

storage failover modify -hwassist-partner-ip <node02-mgmt-ip> -node <clustername>-01

storage failover modify -hwassist-partner-ip <node01-mgmt-ip> -node <clustername>-02
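Hardware assist is configured crosswise: each node is given the management IP address of its partner. The small Python sketch below makes that crossing explicit for one HA pair; the helper name and the example addresses are hypothetical.

```python
"""Sketch: build the crosswise hwassist commands for one HA pair."""


def hwassist_commands(mgmt_ips: dict[str, str]) -> list[str]:
    """Give each node of the pair its partner's node management IP address."""
    if len(mgmt_ips) != 2:
        raise ValueError("expected exactly the two nodes of one HA pair")
    (node_a, ip_a), (node_b, ip_b) = mgmt_ips.items()
    return [
        f"storage failover modify -hwassist-partner-ip {ip_b} -node {node_a}",
        f"storage failover modify -hwassist-partner-ip {ip_a} -node {node_b}",
    ]


if __name__ == "__main__":
    # Hypothetical documentation addresses, for illustration only.
    for command in hwassist_commands({"<clustername>-01": "192.0.2.11",
                                      "<clustername>-02": "192.0.2.12"}):
        print(command)
```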

Verify FCP Ports are Set to Target Ports

If the FCP ports are configured as initiators, change them to target mode by following these steps:

1. Check whether the ports are configured correctly:

aff_a400::> system node hardware unified-connect show

Current Current Pending Pending Admin

Node Adapter Mode Type Mode Type Status

------------ ------- ------- --------- ------- --------- -----------

<clustername>-01 5a fc initiator - - online

<clustername>-01 5b fc initiator - - online

<clustername>-01 5c fc initiator - - online

<clustername>-01 5d fc initiator - - online

<clustername>-02 5a fc initiator - - online

<clustername>-02 5b fc initiator - - online

<clustername>-02 5c fc initiator - - online

<clustername>-02 5d fc initiator - - online

8 entries were displayed.

2. Disable all ports that need to be changed:

system node run -node <clustername>-01 -command storage disable adapter 5a

system node run -node <clustername>-01 -command storage disable adapter 5b

system node run -node <clustername>-01 -command storage disable adapter 5c

system node run -node <clustername>-01 -command storage disable adapter 5d

system node run -node <clustername>-02 -command storage disable adapter 5a

system node run -node <clustername>-02 -command storage disable adapter 5b

system node run -node <clustername>-02 -command storage disable adapter 5c

system node run -node <clustername>-02 -command storage disable adapter 5d

3. Change the HBA mode to target:

ucadmin modify -node <clustername>-* -adapter 5a -type target

Warning: FC-4 type on adapter 5b will also be changed to target.

Do you want to continue? {y|n}: y

Any changes will take effect after rebooting the system. Use the "system node reboot" command to reboot.

Any changes will take effect after rebooting the system. Use the "system node reboot" command to reboot.

2 entries were modified.

ucadmin modify -node <clustername>-* -adapter 5c -type target

Warning: FC-4 type on adapter 5d will also be changed to target.

Do you want to continue? {y|n}: y

Any changes will take effect after rebooting the system. Use the "system node reboot" command to reboot.

Any changes will take effect after rebooting the system. Use the "system node reboot" command to reboot.

2 entries were modified.

4. Reboot the first controller node:

node reboot -node <clustername>-01

5. Wait until the first node is back up and running, and then reboot the second node:

node reboot -node <clustername>-02
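When many adapters are involved, the commands in steps 2 and 3 can be generated from the unified-connect show output instead of being typed by hand. The following Python sketch is illustrative only: it assumes the whitespace-separated column layout shown in step 1, and it assumes, based on the ucadmin warnings above, that adapters are paired 5a/5b and 5c/5d, so one ucadmin modify per pair and per node is sufficient. The node names in the sample output and the function names are placeholders.

```python
"""Sketch: turn 'system node hardware unified-connect show' output into the
disable and ucadmin commands from steps 2 and 3.

Assumptions: the column layout shown in this guide, and adapter pairs of
consecutive letters (5a/5b, 5c/5d), as the ucadmin warnings suggest.
"""

SAMPLE = """\
                      Current  Current    Pending  Pending    Admin
Node         Adapter  Mode     Type       Mode     Type       Status
------------ -------  -------  ---------  -------  ---------  -----------
cluster-01   5a       fc       initiator  -        -          online
cluster-01   5b       fc       initiator  -        -          online
cluster-02   5c       fc       initiator  -        -          online
cluster-02   5d       fc       target     -        -          online
4 entries were displayed.
"""


def initiator_adapters(output: str) -> dict[str, list[str]]:
    """Return {node: [adapter, ...]} for rows whose current type is initiator."""
    found: dict[str, list[str]] = {}
    in_rows = False
    for line in output.splitlines():
        fields = line.split()
        if fields and set(fields[0]) == {"-"}:
            in_rows = True  # the dashed separator line precedes the data rows
            continue
        if in_rows and len(fields) == 7 and fields[3] == "initiator":
            found.setdefault(fields[0], []).append(fields[1])
    return found


def pair_leader(adapter: str) -> str:
    """Map an adapter to the first port of its assumed pair: 5b -> 5a, 5d -> 5c."""
    slot, letter = adapter[:-1], adapter[-1]
    offset = (ord(letter) - ord("a")) // 2 * 2
    return slot + chr(ord("a") + offset)


def target_mode_commands(output: str) -> list[str]:
    """Disable every initiator adapter, then convert one adapter per pair."""
    found = initiator_adapters(output)
    disable = [
        f"system node run -node {node} -command storage disable adapter {adapter}"
        for node, adapters in found.items()
        for adapter in adapters
    ]
    modify = [
        f"ucadmin modify -node {node} -adapter {leader} -type target"
        for node, adapters in found.items()
        for leader in sorted({pair_leader(a) for a in adapters})
    ]
    return disable + modify


if __name__ == "__main__":
    for command in target_mode_commands(SAMPLE):
        print(command)
```

The sketch only prints commands. Review the output before pasting it into the cluster shell; the mode change still requires the node reboots in steps 4 and 5.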

Disable Flow Control on 25/40/100GbE Ports

NetApp recommends disabling flow control on all the 25/40/100GbE ports that are connected to external devices. To disable flow control, follow these steps:

1. Run the following commands to configure node 1:

network port modify -node <clustername>-01 -port e0e,e0f -flowcontrol-admin none

Warning: Changing the network port settings will cause a several second interruption in carrier.

Do you want to continue? {y|n}: y

2. Run the following commands to configure node 2:

network port modify -node <clustername>-02 -port e0e,e0f -flowcontrol-admin none

Warning: Changing the network port settings will cause a several second interruption in carrier.

Do you want to continue? {y|n}: y

network port show -fields flowcontrol-admin

NetApp AutoSupport® sends support summary information to NetApp through HTTPS. To configure AutoSupport, run the following command:

system node autosupport modify -node * -state enable -mail-hosts <mailhost> -transport https -support enable -noteto <storage-admin-email>

Enable Cisco Discovery Protocol

To enable the Cisco Discovery Protocol (CDP) on the NetApp storage controllers, run the following command:

node run -node * options cdpd.enable on


To be effective, CDP must also be enabled on directly connected networking equipment such as switches and routers.

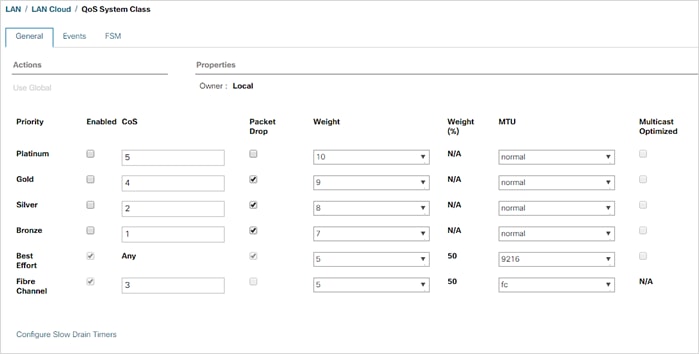

Create Broadcast Domain for NFS Access

In this setup, VLAN ID 228 is used for NFS access, which is required for /hana/shared in SAP HANA multiple-host setups.

The broadcast domain must be created with an MTU size of 9000 (jumbo frames):

broadcast-domain create -broadcast-domain NFS -mtu 9000

To create the LACP interface groups for the 25GbE data interfaces, run the following commands:

ifgrp create -node <clustername>-01 -ifgrp a0a -distr-func port -mode multimode_lacp

ifgrp add-port -node <clustername>-01 -ifgrp a0a -port e0e

ifgrp add-port -node <clustername>-01 -ifgrp a0a -port e0f

ifgrp create -node <clustername>-02 -ifgrp a0a -distr-func port -mode multimode_lacp

ifgrp add-port -node <clustername>-02 -ifgrp a0a -port e0e

ifgrp add-port -node <clustername>-02 -ifgrp a0a -port e0f

ifgrp show

Create VLAN for NFS Access

To create VLANs, follow these steps:

1. Set the MTU size of the interface groups.

network port modify -node <clustername>-01 -port a0a -mtu 9000

network port modify -node <clustername>-02 -port a0a -mtu 9000

2. Create HANA NFS VLAN ports and add them to the NFS broadcast domain.

network port vlan create -node <clustername>-01 -vlan-name a0a-<hana-sharednfs-vlan-id>

network port vlan create -node <clustername>-02 -vlan-name a0a-<hana-sharednfs-vlan-id>

broadcast-domain add-ports -broadcast-domain NFS -ports <clustername>-01:a0a-<hana-sharednfs-vlan-id>,<clustername>-02:a0a-<hana-sharednfs-vlan-id>
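The broadcast domain, interface group, MTU, and VLAN commands above follow a fixed per-node pattern, so they can be generated for any number of nodes. This Python sketch reproduces that pattern; the function name is hypothetical, the node names assume the default <clustername>-01/-02 naming, and VLAN 228 is the value used in this setup.

```python
"""Sketch: generate the NFS broadcast domain, ifgrp, and VLAN commands."""


def nfs_vlan_commands(nodes: list[str], vlan_id: int, ifgrp: str = "a0a",
                      ports: tuple[str, ...] = ("e0e", "e0f"),
                      mtu: int = 9000) -> list[str]:
    vlan_port = f"{ifgrp}-{vlan_id}"  # for example a0a-228
    cmds = [f"broadcast-domain create -broadcast-domain NFS -mtu {mtu}"]
    for node in nodes:
        # LACP interface group built from the 25GbE data ports
        cmds.append(f"ifgrp create -node {node} -ifgrp {ifgrp} "
                    f"-distr-func port -mode multimode_lacp")
        cmds.extend(f"ifgrp add-port -node {node} -ifgrp {ifgrp} -port {port}"
                    for port in ports)
    for node in nodes:
        cmds.append(f"network port modify -node {node} -port {ifgrp} -mtu {mtu}")
    for node in nodes:
        cmds.append(f"network port vlan create -node {node} -vlan-name {vlan_port}")
    members = ",".join(f"{node}:{vlan_port}" for node in nodes)
    cmds.append(f"broadcast-domain add-ports -broadcast-domain NFS -ports {members}")
    return cmds


if __name__ == "__main__":
    for command in nfs_vlan_commands(["<clustername>-01", "<clustername>-02"], 228):
        print(command)
```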

For each SVM and for the cluster, create a certificate to allow secure communication over HTTPS. For each certificate, specify the values listed in Table 6.

Table 6. ONTAP Software Parameters Needed to Enable HTTPS

| Cluster Detail | Cluster Detail Value |
|---|---|
| Certificate common name | <cert-common-name> |
| Country code | <cert-country> |
| State | <cert-state> |
| Locality | <cert-locality> |
| Organization | <cert-org> |
| Unit | <cert-unit> |
| Email | <cert-email> |
| Number of days the certificate is valid | <cert-days> |

To configure secure access to the storage controller, follow these steps:

1. Increase the privilege level to access the certificate commands.

set -privilege diag

Do you want to continue? {y|n}: y

2. Generally, a self-signed certificate is already in place. Verify the certificate and obtain parameters (for example the <serial-number>) by running the following command:

security certificate show

3. For each SVM shown, the certificate common name should match the DNS FQDN of the SVM. Delete the two default certificates and replace them with either self-signed certificates or certificates from a certificate authority (CA). To delete the default certificates, run the following commands:

security certificate delete -vserver hana-svm -common-name hana-svm -ca hana-svm -type server -serial <serial-number>


Deleting expired certificates before creating new certificates is a best practice. Run the security certificate delete command to delete the expired certificates, and use tab completion to select and delete each default certificate.

4. To generate and install self-signed certificates, run the following commands as one-time commands. Generate a server certificate for the Infra-SVM, the HANA SVM, and the cluster SVM. Use tab completion to aid in the completion of these commands.

security certificate create -common-name <cert-common-name> -type server -size 2048 -country <cert-country> -state <cert-state> -locality <cert-locality> -organization <cert-org> -unit <cert-unit> -email-addr <cert-email> -expire-days <cert-days> -protocol SSL -hash-function SHA256 -vserver hana-svm

security certificate create -common-name <cert-common-name> -type server -size 2048 -country <cert-country> -state <cert-state> -locality <cert-locality> -organization <cert-org> -unit <cert-unit> -email-addr <cert-email> -expire-days <cert-days> -protocol SSL -hash-function SHA256 -vserver infra-svm

security certificate create -common-name <cert-common-name> -type server -size 2048 -country <cert-country> -state <cert-state> -locality <cert-locality> -organization <cert-org> -unit <cert-unit> -email-addr <cert-email> -expire-days <cert-days> -protocol SSL -hash-function SHA256 -vserver <clustername>

5. To obtain the values for the parameters required in the next step (<cert-ca> and <cert-serial>), run the security certificate show command.

6. Enable each certificate that was just created by using the -server-enabled true and -client-enabled false parameters. Use tab completion to aid in the completion of these commands.

security ssl modify -vserver <clustername> -server-enabled true -client-enabled false -ca <cert-ca> -serial <cert-serial> -common-name <cert-common-name>

security ssl modify -vserver hana-svm -server-enabled true -client-enabled false -ca <cert-ca> -serial <cert-serial> -common-name <cert-common-name>

security ssl modify -vserver infra-svm -server-enabled true -client-enabled false -ca <cert-ca> -serial <cert-serial> -common-name <cert-common-name>

7. Disable HTTP cluster management access.

system services firewall policy delete -policy mgmt -service http -vserver <clustername>


It is normal for some of these commands to return an error message stating that the entry does not exist.

8. Change back to the normal admin privilege level and set up the system so that SVM logs are available through the web.

set -privilege admin

vserver services web modify -name spi|ontapi|compat -vserver * -enabled true

Configure SVM for the Infrastructure

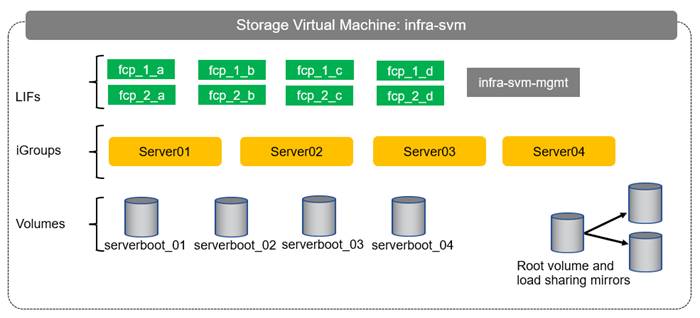

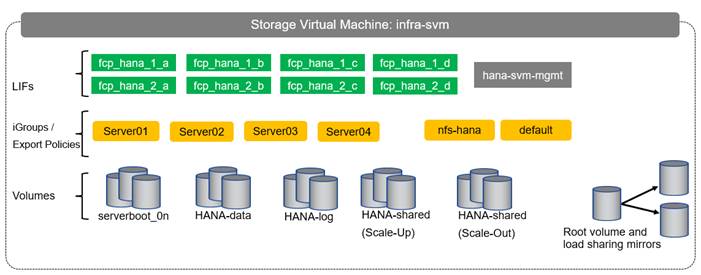

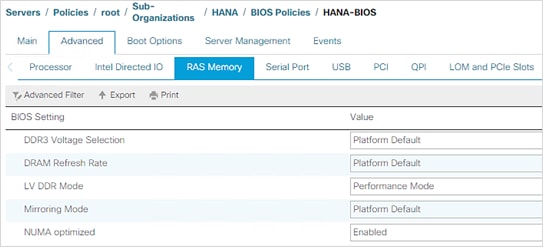

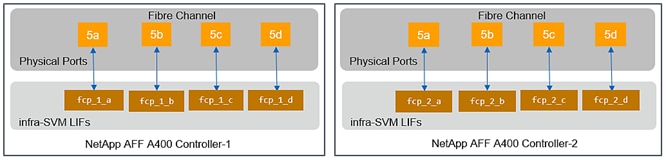

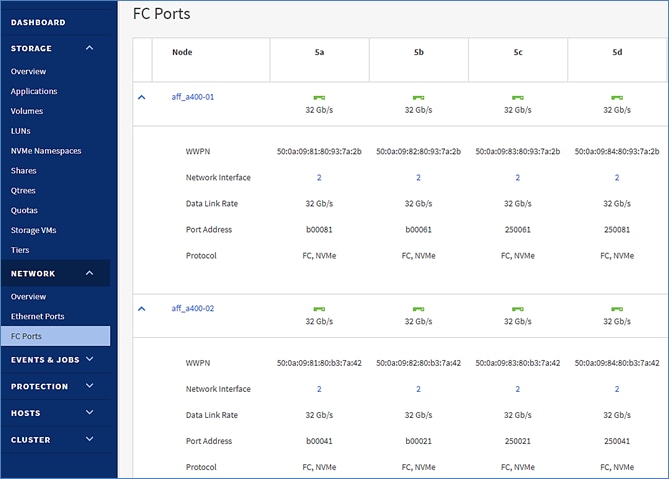

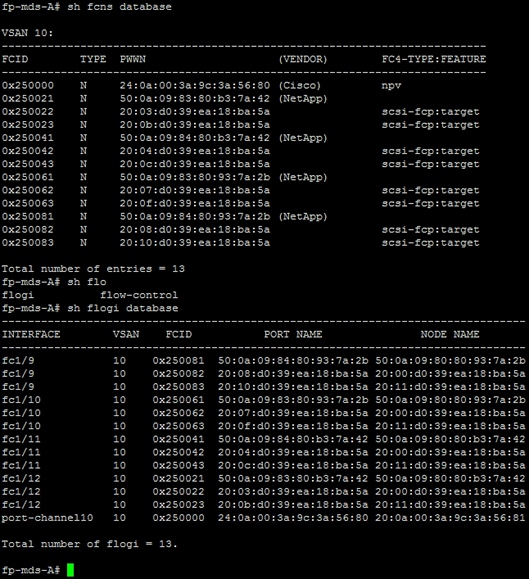

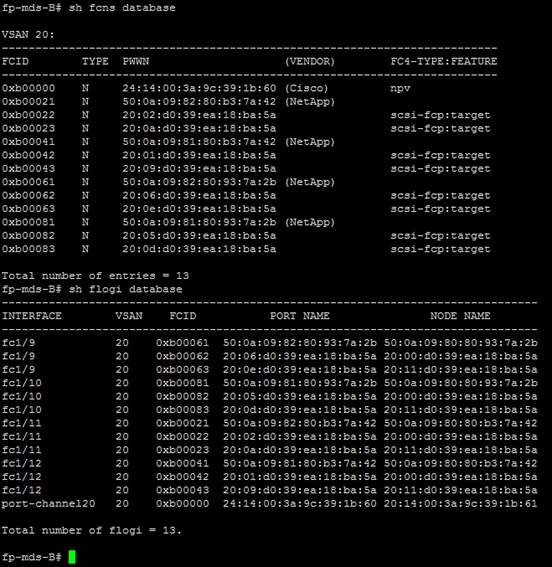

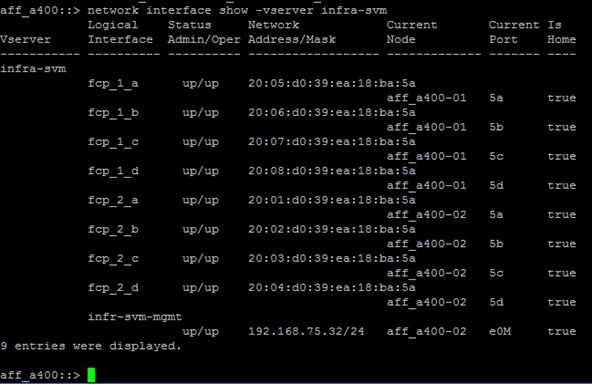

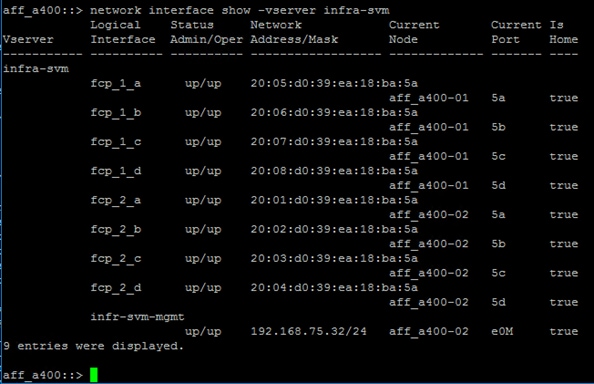

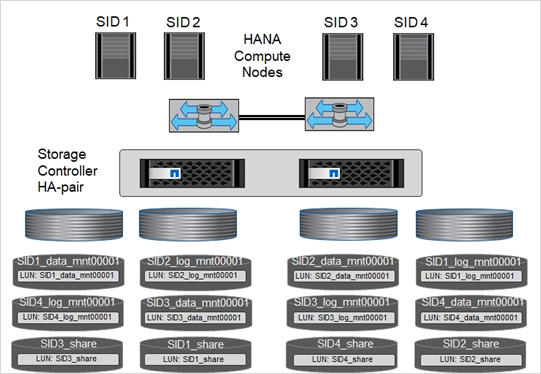

Table 7 and Figure 3 describe the infrastructure SVM together with all required storage objects (volumes and LIFs).

Figure 3. Overview of Infrastructure SVM Components

Table 7. ONTAP Software Parameters for Infrastructure SVMs

| Cluster Detail | Cluster Detail Value | Value used in validation setup |
|---|---|---|
| Infrastructure SVM management IP | <infra-svm-ip> | 192.168.75.46 |
| Infrastructure SVM management IP netmask | <infra-svm-netmask> | 255.255.255.0 |
| Infrastructure SVM default gateway | <infra-svm-gateway> | 192.168.75.1 |

Create SVM for the Infrastructure

To create an infrastructure SVM, follow these steps:

1. Run the vserver create command.

vserver create -vserver infra-svm -rootvolume infra_rootvol -aggregate aggr2_1 -rootvolume-security-style unix

2. Select the SVM data protocols to configure, keeping FCP and NFS.

vserver remove-protocols -vserver infra-svm -protocols iscsi,cifs,nvme

3. Add the data aggregates to the SVM aggregate list.

vserver modify -vserver infra-svm -aggr-list aggr1_1,aggr2_1,aggr1_2,aggr2_2

4. Enable and run the NFS protocol in the SVM.

nfs create -vserver infra-svm -udp disabled

To create a load-sharing mirror of an SVM root volume, follow these steps:

1. Create a volume to be the load-sharing mirror of the infrastructure SVM root volume on each node.

volume create -vserver infra-svm -volume infra_rootvol_m01 -aggregate aggr2_1 -size 1GB -type DP

volume create -vserver infra-svm -volume infra_rootvol_m02 -aggregate aggr2_2 -size 1GB -type DP

2. Create the mirroring relationships.

snapmirror create -source-path infra-svm:infra_rootvol -destination-path infra-svm:infra_rootvol_m01 -type LS -schedule 5min

snapmirror create -source-path infra-svm:infra_rootvol -destination-path infra-svm:infra_rootvol_m02 -type LS -schedule 5min

3. Initialize the mirroring relationship.

snapmirror initialize-ls-set -source-path infra-svm:infra_rootvol

snapmirror show
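The load-sharing mirror steps are identical for every SVM apart from the names. The following hypothetical Python helper produces the commands for one SVM, assuming one mirror per listed aggregate and the _m01, _m02 naming used in this guide.

```python
"""Sketch: build the load-sharing (LS) mirror commands for an SVM root volume."""


def ls_mirror_commands(svm: str, root_volume: str, aggregates: list[str],
                       size: str = "1GB", schedule: str = "5min") -> list[str]:
    mirrors = [f"{root_volume}_m{i:02d}" for i in range(1, len(aggregates) + 1)]
    cmds = [
        f"volume create -vserver {svm} -volume {mirror} -aggregate {aggr} "
        f"-size {size} -type DP"
        for mirror, aggr in zip(mirrors, aggregates)
    ]
    cmds += [
        f"snapmirror create -source-path {svm}:{root_volume} "
        f"-destination-path {svm}:{mirror} -type LS -schedule {schedule}"
        for mirror in mirrors
    ]
    # One initialize call covers every LS mirror of the source volume.
    cmds.append(f"snapmirror initialize-ls-set -source-path {svm}:{root_volume}")
    return cmds


if __name__ == "__main__":
    for command in ls_mirror_commands("infra-svm", "infra_rootvol", ["aggr2_1", "aggr2_2"]):
        print(command)
```

Calling ls_mirror_commands("hana-svm", "hana_rootvol", ["aggr2_1", "aggr2_2"]) yields the corresponding commands for the HANA SVM.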

Add Infrastructure SVM Management LIF

To add the infrastructure SVM administration LIF in the out-of-band management network, follow these steps:

1. Run the following commands:

network interface create -vserver infra-svm -lif infra-svm-mgmt -service-policy default-management -role data -data-protocol none -home-node <clustername>-02 -home-port e0M -address <infra-svm-ip> -netmask <infra-svm-netmask> -status-admin up -failover-policy broadcast-domain-wide -firewall-policy mgmt -auto-revert true


The SVM management IP in this step should be in the same subnet as the storage cluster management IP.

2. Create a default route to allow the SVM management interface to reach the outside world.

network route create -vserver infra-svm -destination 0.0.0.0/0 -gateway <infra-svm-gateway>

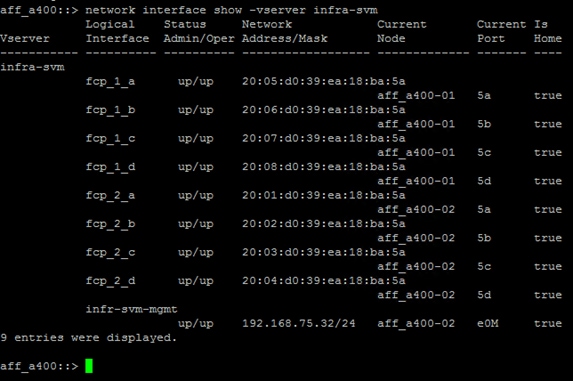

To create the eight FCP LIFs (four on each node), run the following commands:

net interface create -vserver infra-svm -lif fcp_2_a -data-protocol fcp -role data -home-node <clustername>-02 -home-port 5a

net interface create -vserver infra-svm -lif fcp_2_b -data-protocol fcp -role data -home-node <clustername>-02 -home-port 5b

net interface create -vserver infra-svm -lif fcp_2_c -data-protocol fcp -role data -home-node <clustername>-02 -home-port 5c

net interface create -vserver infra-svm -lif fcp_2_d -data-protocol fcp -role data -home-node <clustername>-02 -home-port 5d

net interface create -vserver infra-svm -lif fcp_1_a -data-protocol fcp -role data -home-node <clustername>-01 -home-port 5a

net interface create -vserver infra-svm -lif fcp_1_b -data-protocol fcp -role data -home-node <clustername>-01 -home-port 5b

net interface create -vserver infra-svm -lif fcp_1_c -data-protocol fcp -role data -home-node <clustername>-01 -home-port 5c

net interface create -vserver infra-svm -lif fcp_1_d -data-protocol fcp -role data -home-node <clustername>-01 -home-port 5d
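The LIF names encode the node number and the port letter (fcp_<node>_<port>), which makes it straightforward to generate them and to build the -port-name list for a portset. A Python sketch under that naming assumption; the functions are hypothetical helpers and the node names are placeholders.

```python
"""Sketch: generate FCP LIF and portset commands from the naming pattern."""


def fcp_lif_commands(svm: str, nodes: list[str],
                     ports: tuple[str, ...] = ("5a", "5b", "5c", "5d"),
                     prefix: str = "fcp") -> list[str]:
    cmds = []
    for number, node in enumerate(nodes, start=1):
        for port in ports:
            lif = f"{prefix}_{number}_{port[-1]}"  # for example fcp_1_a
            cmds.append(f"net interface create -vserver {svm} -lif {lif} "
                        f"-data-protocol fcp -role data -home-node {node} -home-port {port}")
    return cmds


def portset_command(svm: str, portset: str, lifs: list[str]) -> str:
    return f"portset create -vserver {svm} -portset {portset} -protocol fcp -port-name {','.join(lifs)}"


if __name__ == "__main__":
    for command in fcp_lif_commands("infra-svm", ["<clustername>-01", "<clustername>-02"]):
        print(command)
    print(portset_command("infra-svm", "boot", ["fcp_1_a", "fcp_1_c", "fcp_2_a", "fcp_2_c"]))
```

With prefix="fcp_hana" and svm="hana-svm", the same helper produces the HANA SVM LIF names used later in this guide.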

Create Block Protocol (FCP) Service

Run the following command to create the FCP service. This command also starts the service.

fcp create -vserver infra-svm

To create the FlexVol volume for the server boot LUNs and update the load-sharing mirrors, run the following commands:

volume create -vserver infra-svm -volume server_boot -aggregate aggr1_1 -size 1000GB -state online -space-guarantee none -percent-snapshot-space 0

snapmirror update-ls-set -source-path infra-svm:infra_rootvol

Create Boot LUNs for Servers

To create boot LUNs, run the following commands. This example creates boot LUNs for four servers. Repeat the command with a different LUN name to create additional boot LUNs for additional servers.

lun create -vserver infra-svm -volume server_boot -lun server-01 -size 100G -ostype Linux -space-reserve disabled

lun create -vserver infra-svm -volume server_boot -lun server-02 -size 100G -ostype Linux -space-reserve disabled

lun create -vserver infra-svm -volume server_boot -lun server-03 -size 100G -ostype Linux -space-reserve disabled

lun create -vserver infra-svm -volume server_boot -lun server-04 -size 100G -ostype Linux -space-reserve disabled

Create Portset

To create a portset that includes four FCP LIFs, run the following command:

portset create -vserver infra-svm -portset boot -protocol fcp -port-name fcp_1_a,fcp_1_c,fcp_2_a,fcp_2_c

Create igroups


Use the WWPN information you defined in section Create WWPN Pools to create the igroups.

To create igroups, run the following commands:

igroup create -vserver infra-svm -igroup server-01 -protocol fcp -ostype linux -initiator <server-host-01-wwpns> -portset boot

igroup create -vserver infra-svm -igroup server-02 -protocol fcp -ostype linux -initiator <server-host-02-wwpns> -portset boot

igroup create -vserver infra-svm -igroup server-03 -protocol fcp -ostype linux -initiator <server-host-03-wwpns> -portset boot

igroup create -vserver infra-svm -igroup server-04 -protocol fcp -ostype linux -initiator <server-host-04-wwpns> -portset boot

Repeat the command by using the WWPNs of additional servers to create additional igroups for additional servers.

Map Boot LUNs to igroups

To map server boot LUNs to igroups, run the following commands. Repeat this command to map additional boot LUNs to additional servers.

lun map -vserver infra-svm -volume server_boot -lun server-01 -igroup server-01 -lun-id 0

lun map -vserver infra-svm -volume server_boot -lun server-02 -igroup server-02 -lun-id 0

lun map -vserver infra-svm -volume server_boot -lun server-03 -igroup server-03 -lun-id 0

lun map -vserver infra-svm -volume server_boot -lun server-04 -igroup server-04 -lun-id 0
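Adding another server always takes the same three commands: create the boot LUN, create the igroup, and map the LUN. The sketch below generates them per server. The helper name is hypothetical, and the WWPN values are made-up placeholders for illustration only; use the WWPNs from the WWPN pools you defined.

```python
"""Sketch: per-server boot LUN, igroup, and LUN map commands for infra-svm."""


def boot_lun_commands(servers: dict[str, list[str]], svm: str = "infra-svm",
                      volume: str = "server_boot", size: str = "100G",
                      portset: str = "boot") -> list[str]:
    cmds = []
    for name, wwpns in servers.items():
        # The LUN and the igroup share the server name, as in this guide.
        cmds.append(f"lun create -vserver {svm} -volume {volume} -lun {name} "
                    f"-size {size} -ostype linux -space-reserve disabled")
        cmds.append(f"igroup create -vserver {svm} -igroup {name} -protocol fcp "
                    f"-ostype linux -initiator {','.join(wwpns)} -portset {portset}")
        # Each boot LUN is presented to its server as LUN ID 0.
        cmds.append(f"lun map -vserver {svm} -volume {volume} -lun {name} "
                    f"-igroup {name} -lun-id 0")
    return cmds


if __name__ == "__main__":
    # Hypothetical WWPNs, for illustration only.
    example = {"server-05": ["20:00:00:25:b5:0a:00:04", "20:00:00:25:b5:0b:00:04"]}
    for command in boot_lun_commands(example):
        print(command)
```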

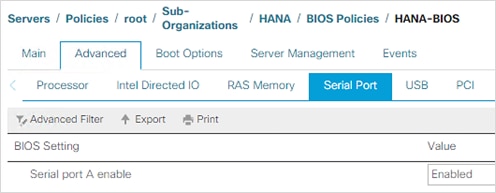

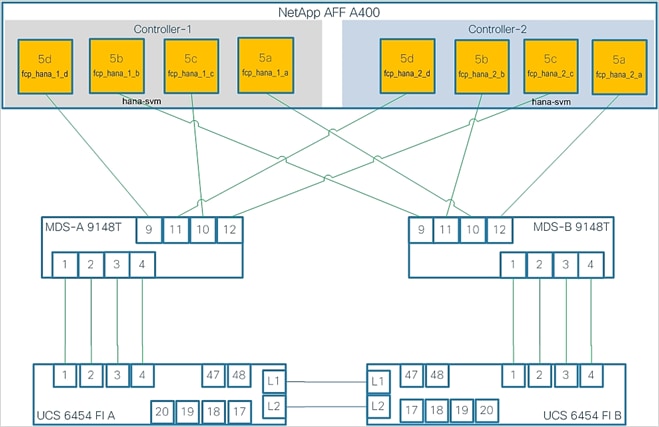

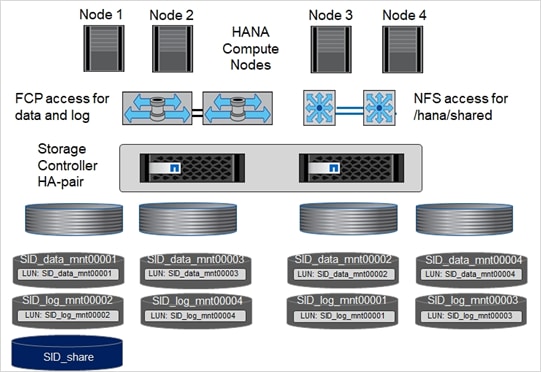

Table 8 and Figure 4 describe the HANA SVM together with all the required storage objects (volumes, export-policies, and LIFs).

Figure 4. Overview of SAP HANA SVM Components

Table 8. ONTAP Software Parameter for HANA SVM

| Cluster Detail | Cluster Detail Value | Value used in CVD setup |
|---|---|---|
| HANA SVM management IP | <hana-svm-ip> | 192.168.75.47 |
| HANA SVM management IP netmask | <hana-svm-netmask> | 255.255.255.0 |
| HANA SVM default gateway | <hana-svm-gateway> | 192.168.75.1 |
| NFS Shared CIDR | <data-cidr> | 192.168.228.0 |
| NFS Shared netmask | <data-netmask> | 255.255.255.0 |
| NFS shared LIF node 1 IP | <node01-sharednfs_lif01-ip> | 192.168.228.21 |
| NFS shared LIF node 2 IP | <node02-sharednfs_lif02-ip> | 192.168.228.22 |
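Before running the following commands, it can help to confirm that the values in Table 8 are consistent: the default gateway should lie in the management subnet, and both NFS shared LIF addresses should lie inside the NFS shared network. A minimal Python sketch using the standard ipaddress module and the validation values from Table 8; the dictionary keys mirror the table's placeholders and the function name is hypothetical.

```python
"""Sketch: consistency check of the Table 8 addressing with ipaddress."""
import ipaddress

TABLE_8 = {
    "hana-svm-ip": "192.168.75.47",
    "hana-svm-netmask": "255.255.255.0",
    "hana-svm-gateway": "192.168.75.1",
    "data-cidr": "192.168.228.0",
    "data-netmask": "255.255.255.0",
    "node01-sharednfs_lif01-ip": "192.168.228.21",
    "node02-sharednfs_lif02-ip": "192.168.228.22",
}


def check_table(values: dict[str, str]) -> list[str]:
    """Return a list of problems; an empty list means the values are consistent."""
    mgmt = ipaddress.ip_interface(
        f"{values['hana-svm-ip']}/{values['hana-svm-netmask']}").network
    nfs = ipaddress.ip_network(f"{values['data-cidr']}/{values['data-netmask']}")
    problems = []
    if ipaddress.ip_address(values["hana-svm-gateway"]) not in mgmt:
        problems.append(f"gateway {values['hana-svm-gateway']} is outside {mgmt}")
    for key in ("node01-sharednfs_lif01-ip", "node02-sharednfs_lif02-ip"):
        if ipaddress.ip_address(values[key]) not in nfs:
            problems.append(f"{key} {values[key]} is outside {nfs}")
    return problems


if __name__ == "__main__":
    print(check_table(TABLE_8))  # prints: []
```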

To create an SVM for SAP HANA volumes, follow these steps:

1. Run the vserver create command.

vserver create -vserver hana-svm -rootvolume hana_rootvol -aggregate aggr1_2 -rootvolume-security-style unix

2. Select the SVM data protocols to configure, keeping NFS and FCP.

vserver remove-protocols -vserver hana-svm -protocols cifs,iscsi,nvme

3. Add the data aggregates to the hana-svm aggregate list.

vserver modify -vserver hana-svm -aggr-list aggr1_1,aggr1_2,aggr2_1,aggr2_2

4. Disable any QoS policy at the SVM level.

vserver modify -vserver hana-svm -qos-policy-group none

5. Enable and run the NFS protocol in the HANA SVM.

nfs create -vserver hana-svm -v3 enabled

6. Enable a large NFS transfer size.

set advanced

vserver nfs modify -vserver hana-svm -tcp-max-transfersize 1048576

set admin

7. Set the group ID of the user root to 0.

vserver services unix-user modify -vserver hana-svm -user root -primary-gid 0

To create a load-sharing mirror of an SVM root volume, follow these steps:

1. Create a volume to be the load-sharing mirror of the HANA SVM root volume on each node.

volume create -vserver hana-svm -volume hana_rootvol_m01 -aggregate aggr2_1 -size 1GB -type DP

volume create -vserver hana-svm -volume hana_rootvol_m02 -aggregate aggr2_2 -size 1GB -type DP

2. Create the mirroring relationships.

snapmirror create -source-path hana-svm:hana_rootvol -destination-path hana-svm:hana_rootvol_m01 -type LS -schedule 5min

snapmirror create -source-path hana-svm:hana_rootvol -destination-path hana-svm:hana_rootvol_m02 -type LS -schedule 5min

3. Initialize the mirroring relationship.

snapmirror initialize-ls-set -source-path hana-svm:hana_rootvol

Create Export Policies for the Root Volumes

To configure the NFS export policies on the SVM, follow these steps:

1. Create a rule in the default export policy of the HANA SVM that allows NFS access from all clients (0.0.0.0/0).

vserver export-policy rule create -vserver hana-svm -policyname default -ruleindex 1 -protocol nfs -clientmatch 0.0.0.0/0 -rorule sys -rwrule sys -superuser sys -allow-suid true

2. Assign the default export policy to the HANA SVM root volume.

volume modify -vserver hana-svm -volume hana_rootvol -policy default

Add HANA SVM Management Interface and Administrator

To add the HANA SVM administrator and SVM administration LIF in the out-of-band management network, follow these steps:

1. Run the following commands:

network interface create -vserver hana-svm -lif hana-svm-mgmt -service-policy default-management -role data -data-protocol none -home-node <clustername>-02 -home-port e0M -address <hana-svm-ip> -netmask <hana-svm-netmask> -status-admin up -failover-policy broadcast-domain-wide -firewall-policy mgmt -auto-revert true


The SVM management IP in this step should be in the same subnet as the storage cluster management IP.

2. Create a default route to allow the SVM management interface to reach the outside world.

network route create -vserver hana-svm -destination 0.0.0.0/0 -gateway <hana-svm-gateway>

3. Set a password for the SVM vsadmin user and unlock the user.

security login password -username vsadmin -vserver hana-svm

Enter a new password: <password>

Enter it again: <password>

security login unlock -username vsadmin -vserver hana-svm

Create Export Policies for the HANA SVM

1. Create a new export policy for the HANA data and log subnets.

vserver export-policy create -vserver hana-svm -policyname nfs-hana

2. Create a rule for this policy.

vserver export-policy rule create -vserver hana-svm -policyname nfs-hana -clientmatch <data-cidr>,<log-cidr> -rorule sys -rwrule sys -allow-suid true -allow-dev true -ruleindex 1 -protocol nfs -superuser sys

Create NFS LIF for SAP HANA Shared

To create the NFS LIFs for the SAP HANA shared file system (/hana/shared), run the following commands:

network interface create -vserver hana-svm -lif data-01 -role data -data-protocol nfs -home-node <clustername>-01 -home-port a0a-<hana-sharednfs-vlan-id> -address <node01-sharednfs_lif01-ip> -netmask <data-netmask> -status-admin up -failover-policy broadcast-domain-wide -firewall-policy data -auto-revert true

network interface create -vserver hana-svm -lif data-02 -role data -data-protocol nfs -home-node <clustername>-02 -home-port a0a-<hana-sharednfs-vlan-id> -address <node02-sharednfs_lif02-ip> -netmask <data-netmask> -status-admin up -failover-policy broadcast-domain-wide -firewall-policy data -auto-revert true

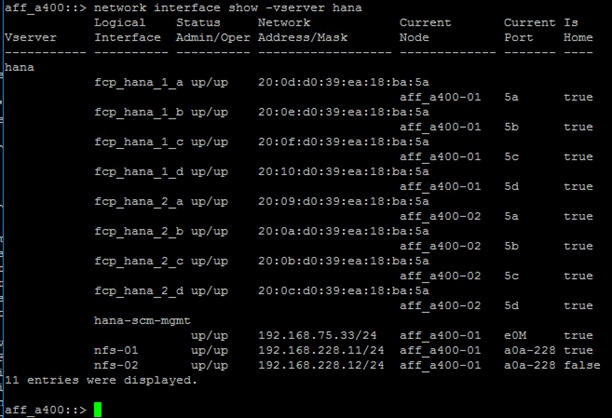

Create FCP LIFs

To create the eight FCP LIFs (four on each node), run the following commands:

net interface create -vserver hana-svm -lif fcp_hana_2_a -data-protocol fcp -role data -home-node <clustername>-02 -home-port 5a

net interface create -vserver hana-svm -lif fcp_hana_2_b -data-protocol fcp -role data -home-node <clustername>-02 -home-port 5b

net interface create -vserver hana-svm -lif fcp_hana_2_c -data-protocol fcp -role data -home-node <clustername>-02 -home-port 5c

net interface create -vserver hana-svm -lif fcp_hana_2_d -data-protocol fcp -role data -home-node <clustername>-02 -home-port 5d

net interface create -vserver hana-svm -lif fcp_hana_1_a -data-protocol fcp -role data -home-node <clustername>-01 -home-port 5a

net interface create -vserver hana-svm -lif fcp_hana_1_b -data-protocol fcp -role data -home-node <clustername>-01 -home-port 5b

net interface create -vserver hana-svm -lif fcp_hana_1_c -data-protocol fcp -role data -home-node <clustername>-01 -home-port 5c

net interface create -vserver hana-svm -lif fcp_hana_1_d -data-protocol fcp -role data -home-node <clustername>-01 -home-port 5d

Create Portset

To create a portset that includes all FCP LIFs, run the following command:

portset create -vserver hana-svm -portset all_ports -protocol fcp -port-name fcp_hana_1_a,fcp_hana_1_b,fcp_hana_1_c,fcp_hana_1_d,fcp_hana_2_a,fcp_hana_2_b,fcp_hana_2_c,fcp_hana_2_d
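The eight FCP LIF commands and the portset follow a regular naming pattern: fcp_hana_<node>_<port letter> on ports 5a through 5d of each controller. The following Python sketch generates the same command text from that pattern, which can help avoid typos when you adapt the design to other port names. The function names and the cluster name "aff-a400" are hypothetical placeholders for <clustername>. The sketch only prints commands and does not connect to the cluster.

```python
from typing import List, Tuple

# FC target ports used on each controller in this design.
PORTS = ("5a", "5b", "5c", "5d")


def fcp_lif_names(ports=PORTS) -> List[Tuple[int, str, str]]:
    """Return (node number, home port, LIF name) for both controllers."""
    return [(node, port, f"fcp_hana_{node}_{port[-1]}")
            for node in (1, 2) for port in ports]


def fcp_commands(cluster: str, vserver: str = "hana-svm") -> List[str]:
    """Build the FCP LIF create commands plus one portset holding all LIFs."""
    lifs = fcp_lif_names()
    cmds = [
        f"net interface create -vserver {vserver} -lif {name} -data-protocol fcp "
        f"-role data -home-node {cluster}-0{node} -home-port {port}"
        for node, port, name in lifs
    ]
    cmds.append(
        f"portset create -vserver {vserver} -portset all_ports -protocol fcp "
        f"-port-name {','.join(name for _, _, name in lifs)}"
    )
    return cmds


for cmd in fcp_commands("aff-a400"):  # hypothetical <clustername>
    print(cmd)
```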

Create igroups for SAP HANA Servers

Use the WWPN information you defined in the section Create WWPN Pools to create the igroups.

To create the igroups, run the following commands. To create igroups for additional servers, repeat the command with the WWPNs of those servers.

igroup create -vserver hana-svm -igroup server-01 -protocol fcp -ostype linux -initiator <server-host-01-wwpns> -portset all_ports

igroup create -vserver hana-svm -igroup server-02 -protocol fcp -ostype linux -initiator <server-host-02-wwpns> -portset all_ports

igroup create -vserver hana-svm -igroup server-03 -protocol fcp -ostype linux -initiator <server-host-03-wwpns> -portset all_ports

igroup create -vserver hana-svm -igroup server-04 -protocol fcp -ostype linux -initiator <server-host-04-wwpns> -portset all_ports
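When you repeat the igroup command for many servers, a typo in a WWPN can be hard to spot. The following Python sketch builds the commands from a server-to-WWPN map and rejects any WWPN that is not eight colon-separated hex bytes. The helper name and the example WWPNs are hypothetical. Use the values from your WWPN pools. The sketch assumes that multiple initiators are passed to -initiator as a comma-separated list.

```python
import re
from typing import Dict, List

# A WWPN is 16 hex digits, written here as 8 colon-separated bytes.
WWPN_RE = re.compile(r"^(?:[0-9a-fA-F]{2}:){7}[0-9a-fA-F]{2}$")


def igroup_commands(servers: Dict[str, List[str]], vserver: str = "hana-svm",
                    portset: str = "all_ports") -> List[str]:
    """Build one igroup create command per server after validating its WWPNs."""
    cmds = []
    for igroup, wwpns in servers.items():
        bad = [w for w in wwpns if not WWPN_RE.match(w)]
        if bad:
            raise ValueError(f"{igroup}: malformed WWPN(s): {bad}")
        cmds.append(
            f"igroup create -vserver {vserver} -igroup {igroup} -protocol fcp "
            f"-ostype linux -initiator {','.join(wwpns)} -portset {portset}"
        )
    return cmds


# Hypothetical WWPNs (one per fabric) for two servers.
servers = {
    "server-01": ["20:00:00:25:b5:0a:00:00", "20:00:00:25:b5:0b:00:00"],
    "server-02": ["20:00:00:25:b5:0a:00:01", "20:00:00:25:b5:0b:00:01"],
}
for cmd in igroup_commands(servers):
    print(cmd)
```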

Cisco UCS Base Configuration

This section explains the configuration steps for the Cisco UCS 6454 Fabric Interconnects (FIs) in a design that supports Fibre Channel SAN boot.

If you are setting up a system with iSCSI boot, skip the sections with (FCP) in the heading, and then complete the section Cisco UCS Backup in the Appendix.

Perform Initial Setup of Cisco UCS 6454 Fabric Interconnects for FlexPod Environments

This section provides the detailed procedures for configuring the Cisco Unified Computing System (Cisco UCS) for use in a FlexPod environment. The steps are necessary to provision the Cisco UCS B-Series and C-Series servers and should be followed precisely to avoid improper configuration.

Cisco UCS Fabric Interconnect A

To configure the Cisco UCS for use in a FlexPod environment, follow these steps:

1. Connect to the console port on the first Cisco UCS fabric interconnect.

Enter the configuration method. (console/gui) ? console

Enter the setup mode; setup newly or restore from backup. (setup/restore) ? setup

You have chosen to setup a new Fabric interconnect. Continue? (y/n): y

Enforce strong password? (y/n) [y]: Enter

Enter the password for "admin": <password>

Confirm the password for "admin": <password>

Is this Fabric interconnect part of a cluster(select 'no' for standalone)? (yes/no) [n]: y

Enter the switch fabric (A/B) []: A

Enter the system name: <ucs-cluster-name>

Physical Switch Mgmt0 IP address : <ucsa-mgmt-ip>

Physical Switch Mgmt0 IPv4 netmask : <ucsa-mgmt-mask>

IPv4 address of the default gateway : <ucsa-mgmt-gateway>

Cluster IPv4 address : <ucs-cluster-ip>

Configure the DNS Server IP address? (yes/no) [n]: y

DNS IP address : <dns-server-1-ip>

Configure the default domain name? (yes/no) [n]: y

Default domain name : <ad-dns-domain-name>

Join centralized management environment (UCS Central)? (yes/no) [n]: Enter

Apply and save the configuration (select 'no' if you want to re-enter)? (yes/no): yes

Cisco UCS Fabric Interconnect B

To configure the Cisco UCS for use in a FlexPod environment, follow these steps:

1. Connect to the console port on the second Cisco UCS fabric interconnect.

Enter the configuration method. (console/gui) ? console

Installer has detected the presence of a peer Fabric interconnect. This Fabric interconnect will be added to the cluster. Continue (y/n) ? y

Enter the admin password of the peer Fabric interconnect: <password>

Connecting to peer Fabric interconnect... done

Retrieving config from peer Fabric interconnect... done

Peer Fabric interconnect Mgmt0 IPv4 Address: <ucsa-mgmt-ip>

Peer Fabric interconnect Mgmt0 IPv4 Netmask: <ucsa-mgmt-mask>

Cluster IPv4 address : <ucs-cluster-ip>

Peer FI is IPv4 Cluster enabled. Please Provide Local Fabric Interconnect Mgmt0 IPv4 Address

Physical Switch Mgmt0 IP address : <ucsb-mgmt-ip>

Local fabric interconnect model(UCS-FI-6454)

Peer fabric interconnect is compatible with the local fabric interconnect. Continuing with the installer...

Apply and save the configuration (select 'no' if you want to re-enter)? (yes/no): yes

2. Wait for the login prompt for the Cisco UCS Fabric Interconnect B before proceeding to the next section.
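The two setup dialogs above use four management addresses: FI A mgmt0, FI B mgmt0, the cluster IP, and the default gateway. FI B reuses the netmask entered on FI A. The following Python sketch checks an addressing plan before you type it into the consoles. It assumes a single flat out-of-band management subnet in which all four addresses must be unique. The function name and example addresses are hypothetical.

```python
import ipaddress
from typing import List


def check_fi_addressing(fi_a: str, fi_b: str, cluster_ip: str,
                        netmask: str, gateway: str) -> List[str]:
    """Return a list of problems; an empty list means the plan looks consistent."""
    subnet = ipaddress.IPv4Network(f"{fi_a}/{netmask}", strict=False)
    addresses = {
        "FI-A mgmt0": fi_a,
        "FI-B mgmt0": fi_b,
        "cluster IP": cluster_ip,
        "default gateway": gateway,
    }
    # Every address must sit in the subnet defined by FI A's mgmt0 IP and netmask.
    problems = [
        f"{label} {ip} is outside {subnet}"
        for label, ip in addresses.items()
        if ipaddress.IPv4Address(ip) not in subnet
    ]
    if len(set(addresses.values())) != len(addresses):
        problems.append("FI-A, FI-B, cluster IP and gateway must all be different")
    return problems


# Hypothetical plan: <ucsa-mgmt-ip>, <ucsb-mgmt-ip>, <ucs-cluster-ip>, mask, gateway.
print(check_fi_addressing("192.168.76.11", "192.168.76.12", "192.168.76.10",
                          "255.255.255.0", "192.168.76.1"))  # []
```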

Cisco UCS Setup

To log into the Cisco Unified Computing System (Cisco UCS) environment, follow these steps:

1. Open a web browser and navigate to the Cisco UCS fabric interconnect cluster address.

You may need to wait at least 5 minutes after configuring the second fabric interconnect for Cisco UCS Manager to open.

2. Click the Launch UCS Manager link to launch Cisco UCS Manager.

3. If prompted to accept security certificates, accept as necessary.

4. When prompted, enter admin for the user name and enter the administrative password.

5. Click Login to log into Cisco UCS Manager.

To enable anonymous reporting, follow this step:

1. In the Anonymous Reporting window, choose whether to send anonymous data to Cisco for improving future products. If you choose Yes, enter the IP address of your SMTP Server. Click OK.

Upgrade Cisco UCS Manager Software to Version 4.1(1d)

This document assumes you’re using Cisco UCS 4.1(1d). To upgrade the Cisco UCS Manager software and the Cisco UCS Fabric Interconnect software to version 4.1(1d), refer to Cisco UCS Manager Install and Upgrade Guides.

To synchronize the Cisco UCS environment to the NTP servers in the Cisco Nexus switches, follow these steps:

1. In Cisco UCS Manager, click Admin.

2. Expand All > Time Zone Management.

3. Choose Timezone.

4. In the Properties pane, choose the appropriate time zone in the Timezone menu.

5. Click Save Changes and then click OK.

6. Click Add NTP Server.

7. Enter <nexus-A-mgmt0-ip> and click OK. Click OK to confirm.

We used the Cisco Nexus switch mgmt0 interface IP because it is in the same L2 domain as the UCS mgmt0 IPs. We could also use the Cisco Nexus NTP IPs, but that traffic would then have to pass through an L3 router.

8. Click Add NTP Server.

9. Enter <nexus-B-mgmt0-ip> and click OK, then click OK again.

To add one or more additional DNS servers to the UCS environment, follow these steps:

1. In Cisco UCS Manager, click Admin.

2. Expand All > Communications Management.

3. Choose DNS Management.

4. In the Properties pane, choose Specify DNS Server.

5. Enter the IP address of the additional DNS server.

6. Click OK and then click OK again. Repeat this process for any additional DNS servers.

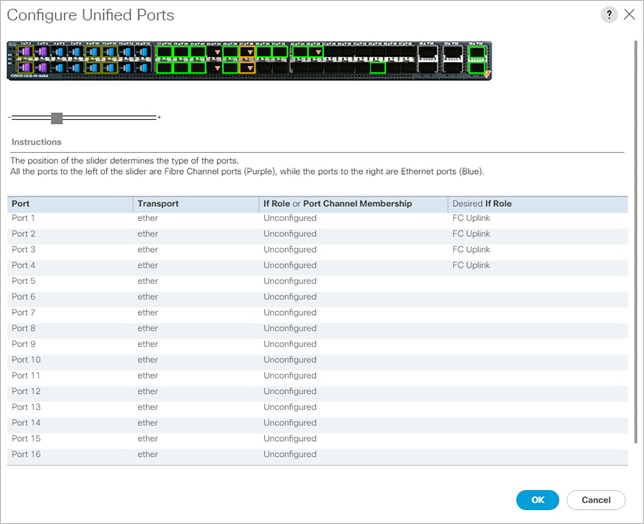

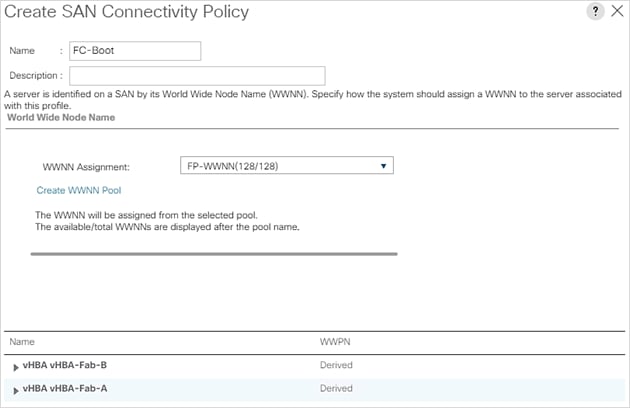

Configure Unified Ports (FCP)

Fibre Channel port configurations differ between the Cisco UCS 6454, 6332-16UP, and 6248UP fabric interconnects. All three fabric interconnects use a slider in the Cisco UCS Manager GUI, but their Fibre Channel port selection options differ. For the Cisco UCS 6454, FC ports are selected from the first 16 ports, starting with the first port and configured in increments of 4 ports from the left. For the Cisco UCS 6332-16UP, the selection also covers the first 16 ports, starting with the first port, in increments of the first 6, 12, or all 16 unified ports. For the 6248UP, the selection starts from the right of the 32 fixed ports, or from the right of the 16 ports of the expansion module, and extends leftward in contiguous increments of 2. The remainder of this section shows the configuration of the 6454. Modify as necessary for the Cisco UCS 6332-16UP or 6248UP.
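The slider rules described above can be summarized as a small lookup. The following Python sketch returns the fixed-module port numbers that become FC ports for a given model and slider count. It is an illustration of the rules as stated in this paragraph, not a Cisco tool. It models only the 6248UP fixed module, not the expansion module. It also assumes that any even count up to all 32 fixed ports can be selected on the 6248UP. The function name is hypothetical.

```python
from typing import List


def fc_port_range(model: str, count: int) -> List[int]:
    """Return the fixed-module port numbers converted to FC for a slider setting."""
    if model == "6454":
        # First 16 ports, increments of 4, counted from port 1.
        if count not in (4, 8, 12, 16):
            raise ValueError("6454: choose 4, 8, 12 or 16 FC ports")
        return list(range(1, count + 1))
    if model == "6332-16UP":
        # First 6, 12 or all 16 unified ports, counted from port 1.
        if count not in (6, 12, 16):
            raise ValueError("6332-16UP: choose 6, 12 or 16 FC ports")
        return list(range(1, count + 1))
    if model == "6248UP":
        # Fixed module only: contiguous from the right (port 32) in steps of 2.
        if count % 2 or not 2 <= count <= 32:
            raise ValueError("6248UP: choose an even count between 2 and 32")
        return list(range(33 - count, 33))
    raise ValueError(f"unknown fabric interconnect model: {model}")


print(fc_port_range("6454", 4))    # [1, 2, 3, 4]
print(fc_port_range("6248UP", 4))  # [29, 30, 31, 32]
```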

To enable the Fibre Channel ports on the Cisco UCS 6454, follow these steps:

1. In Cisco UCS Manager, click Equipment.

2. Choose Equipment > Fabric Interconnects > Fabric Interconnect B (subordinate).

3. Choose Configure Unified Ports.

4. Click Yes in the pop-up window warning that changes to the fixed module will require a reboot of the fabric interconnect and changes to the expansion module will require a reboot of that module.

5. Within the Configured Fixed Ports pop-up window move the gray slider bar from the left to the right to choose either 4, 8, 12, or 16 ports to be set as FC Uplinks.

6. Click OK, then click Yes, then click OK to continue.

7. Choose Equipment > Fabric Interconnects > Fabric Interconnect A (primary).

8. Choose Configure Unified Ports.

9. Click Yes in the pop-up window warning that changes to the fixed module will require a reboot of the fabric interconnect and changes to the expansion module will require a reboot of that module.

10. Within the Configured Fixed Ports pop-up window, move the gray slider bar from the left to the right to choose 4, 8, 12, or 16 ports to be set as FC Uplinks.

11. Click OK, then click Yes, then OK to continue.

12. Wait for both Fabric Interconnects to reboot.

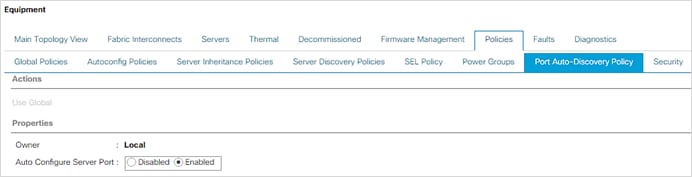

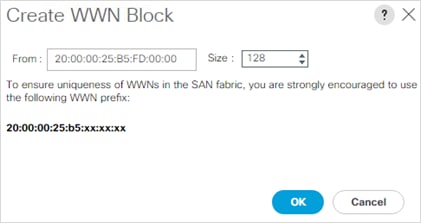

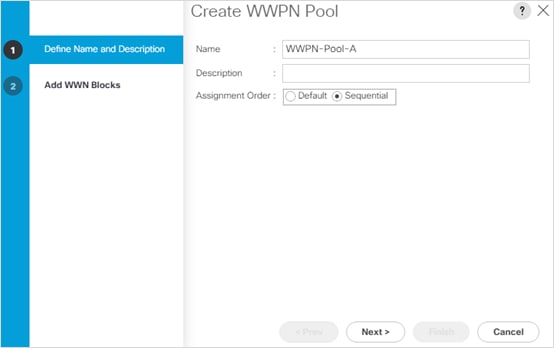

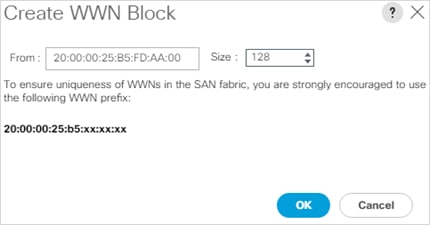

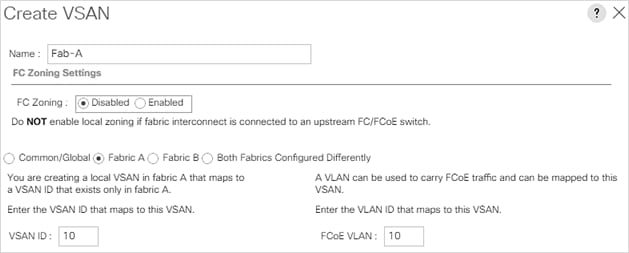

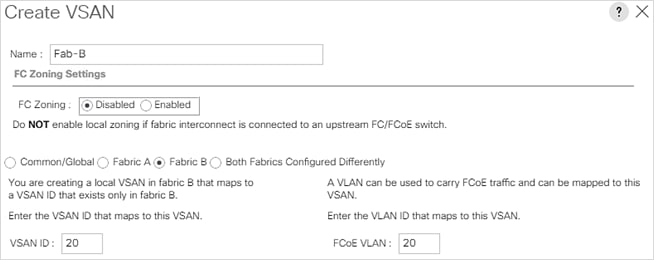

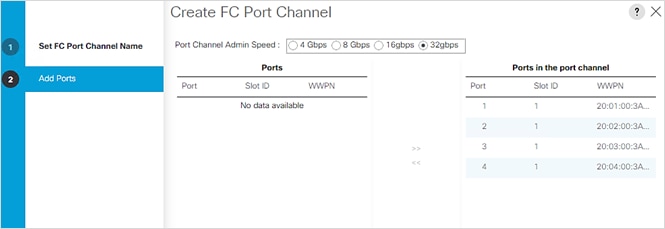

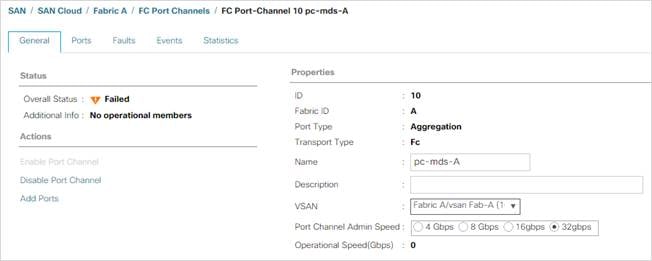

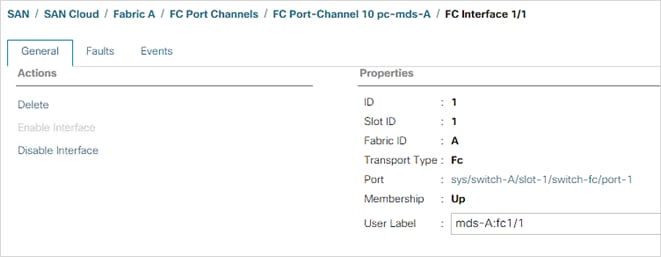

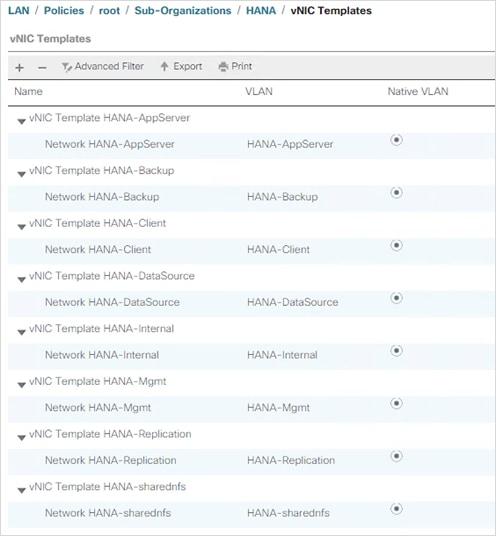

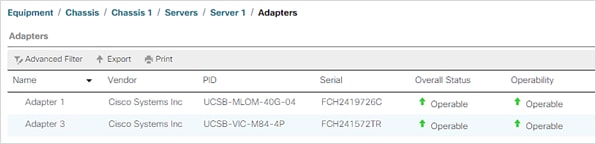

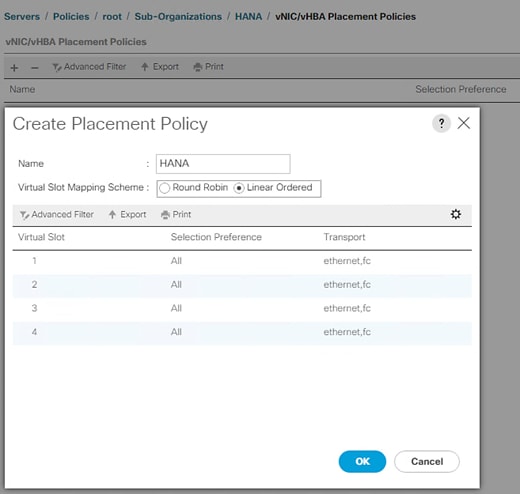

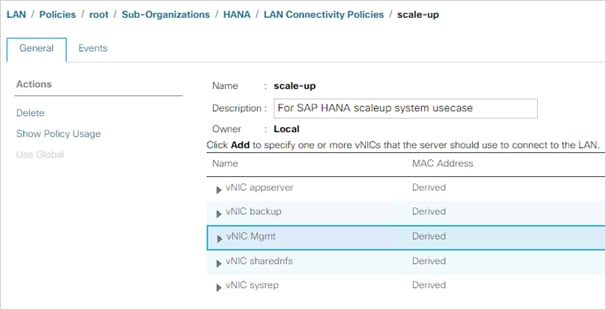

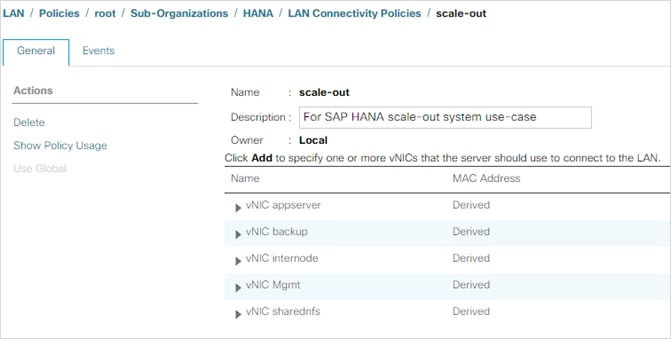

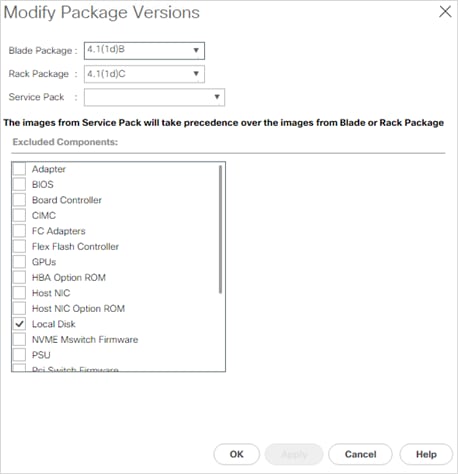

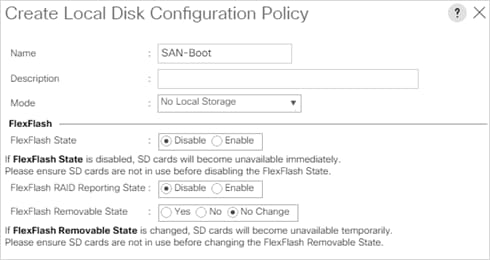

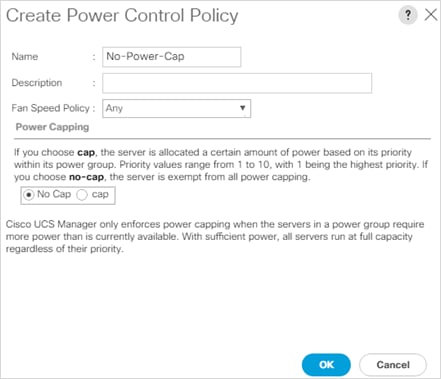

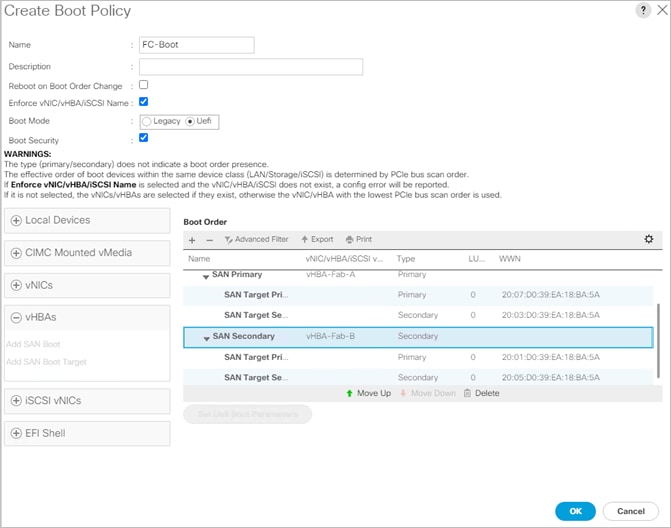

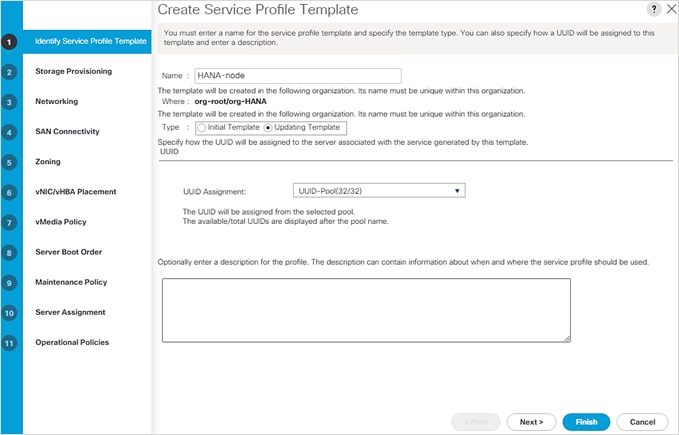

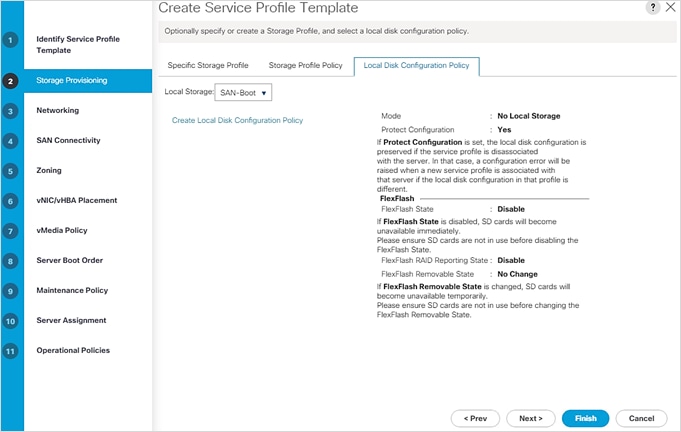

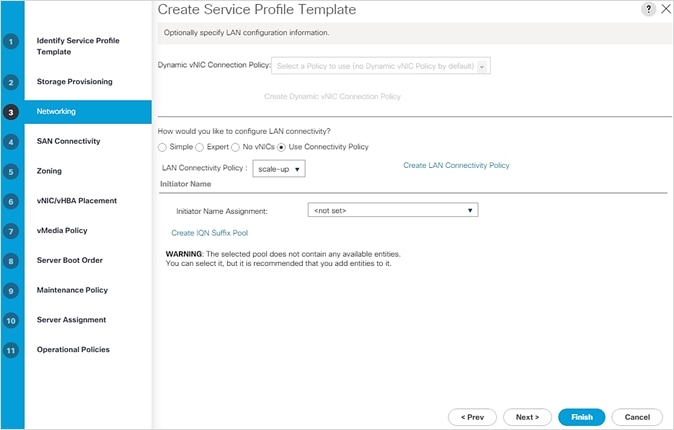

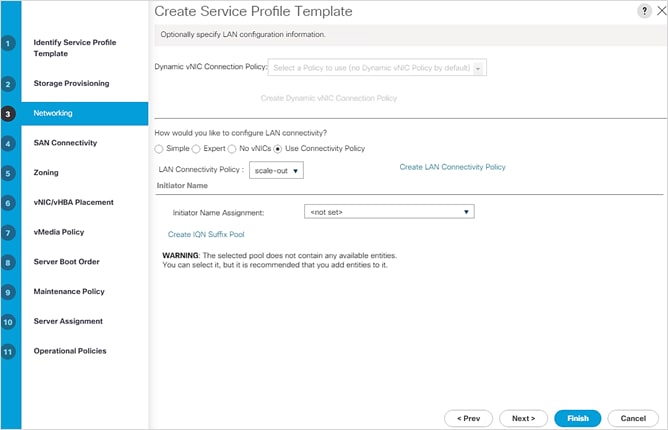

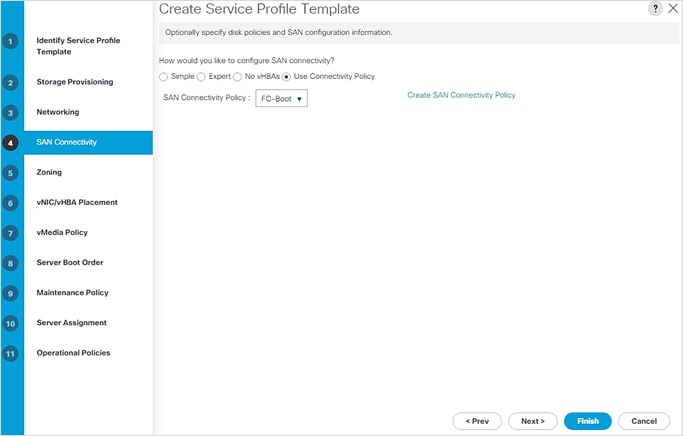

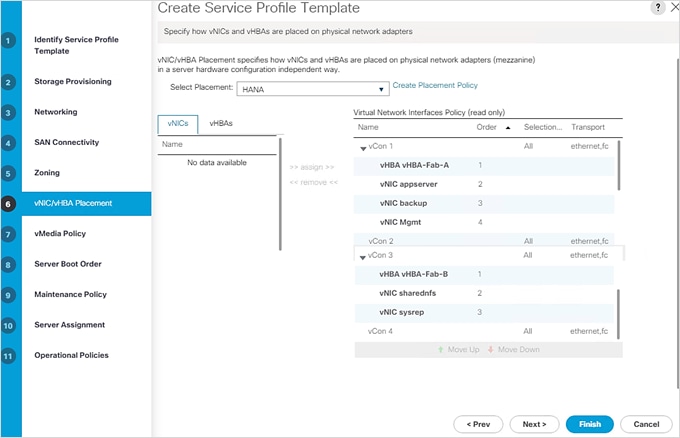

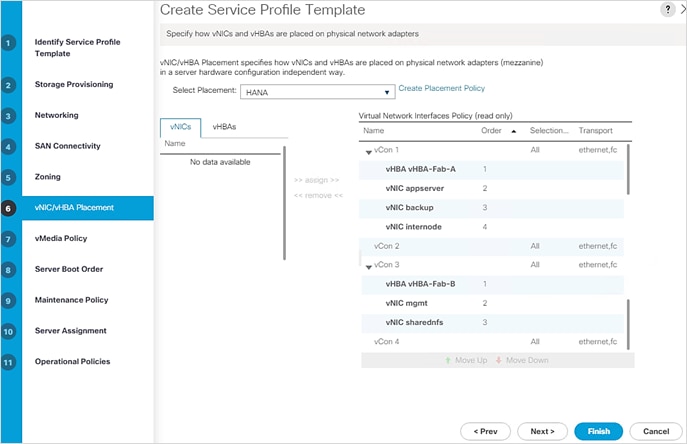

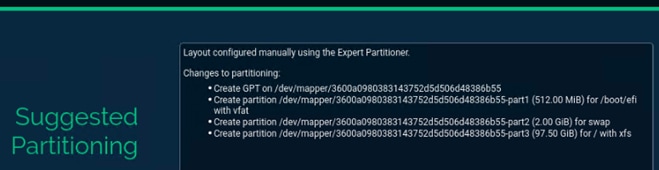

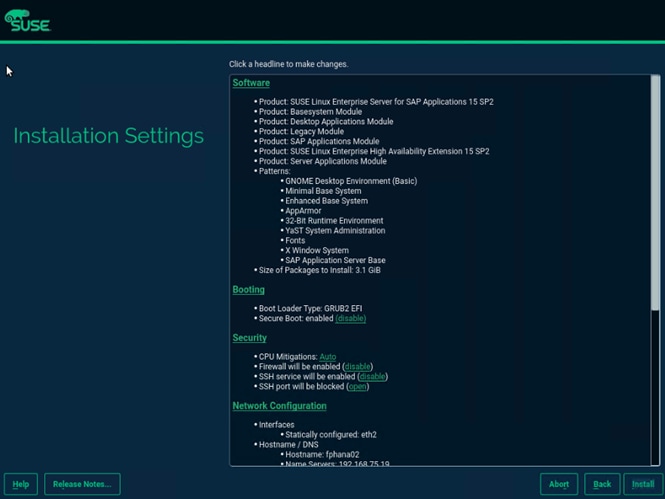

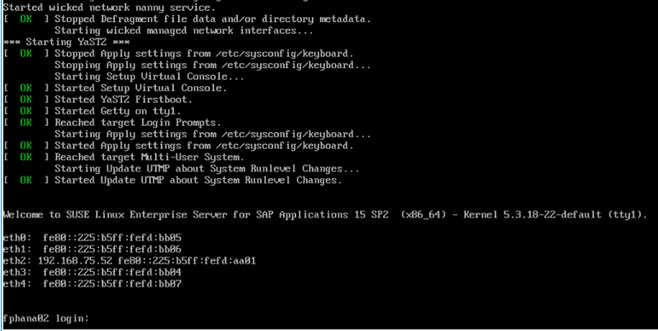

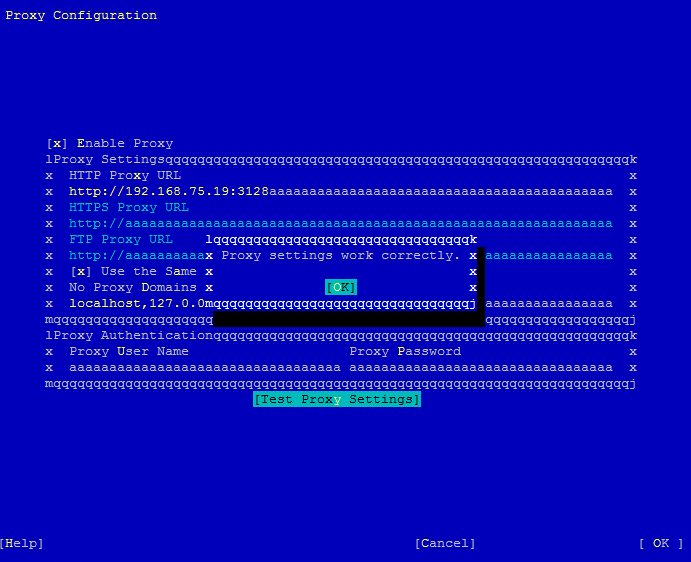

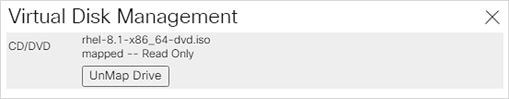

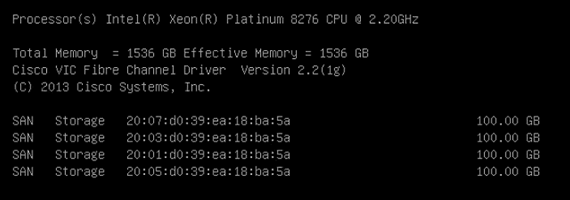

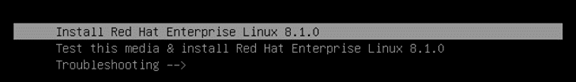

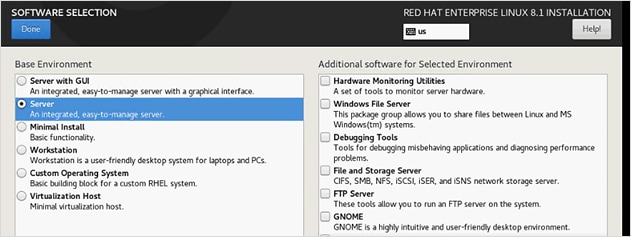

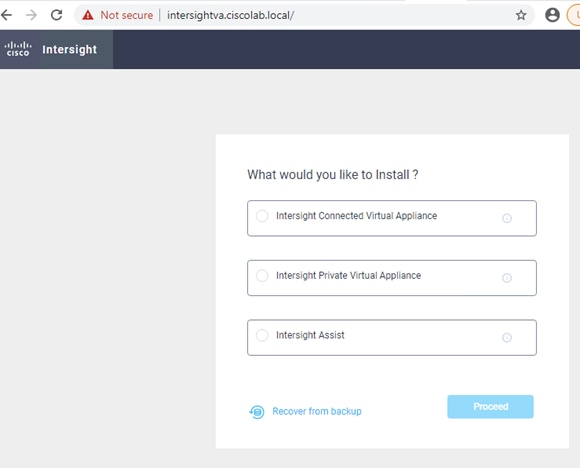

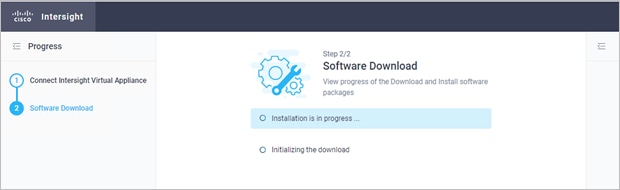

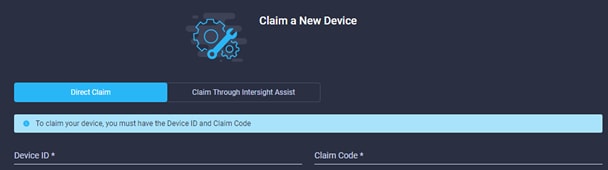

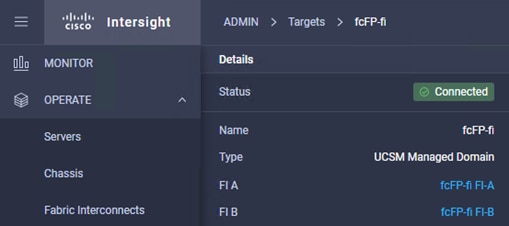

13. Log back into Cisco UCS Manager.