VersaStack with VMware vSphere 6.7, Cisco UCS 4th Generation Fabric, and IBM FS9100 NVMe-accelerated Storage


Deployment Guide for VersaStack with VMware vSphere 6.7 U2, Cisco UCS Manager 4.0(4), and IBM Spectrum Virtualize 8.2.1

Last Updated: October 8, 2019

About the Cisco Validated Design Program

The Cisco Validated Design (CVD) Program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, go to:

http://www.cisco.com/go/designzone.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

CCDE, CCENT, Cisco Eos, Cisco Lumin, Cisco Nexus, Cisco StadiumVision, Cisco TelePresence, Cisco WebEx, the Cisco logo, DCE, and Welcome to the Human Network are trademarks; Changing the Way We Work, Live, Play, and Learn and Cisco Store are service marks; and Access Registrar, Aironet, AsyncOS, Bringing the Meeting To You, Catalyst, CCDA, CCDP, CCIE, CCIP, CCNA, CCNP, CCSP, CCVP, Cisco, the Cisco Certified Internetwork Expert logo, Cisco IOS, Cisco Press, Cisco Systems, Cisco Systems Capital, the Cisco Systems logo, Cisco Unified Computing System (Cisco UCS), Cisco UCS B-Series Blade Servers, Cisco UCS C-Series Rack Servers, Cisco UCS S-Series Storage Servers, Cisco UCS Manager, Cisco UCS Management Software, Cisco Unified Fabric, Cisco Application Centric Infrastructure, Cisco Nexus 9000 Series, Cisco Nexus 7000 Series. Cisco Prime Data Center Network Manager, Cisco NX-OS Software, Cisco MDS Series, Cisco Unity, Collaboration Without Limitation, EtherFast, EtherSwitch, Event Center, Fast Step, Follow Me Browsing, FormShare, GigaDrive, HomeLink, Internet Quotient, IOS, iPhone, iQuick Study, LightStream, Linksys, MediaTone, MeetingPlace, MeetingPlace Chime Sound, MGX, Networkers, Networking Academy, Network Registrar, PCNow, PIX, PowerPanels, ProConnect, ScriptShare, SenderBase, SMARTnet, Spectrum Expert, StackWise, The Fastest Way to Increase Your Internet Quotient, TransPath, WebEx, and the WebEx logo are registered trademarks of Cisco Systems, Inc. and/or its affiliates in the United States and certain other countries.

All other trademarks mentioned in this document or website are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (0809R)

© 2019 Cisco Systems, Inc. All rights reserved.

Table of Contents

Cisco UCS Connectivity to Nexus Switches

IBM FS9100 Connectivity to Nexus Switches

Cisco UCS connectivity to SAN Fabric

Cisco Nexus 9000 Initial Configuration Setup

Enable Appropriate Cisco Nexus 9000 Features and Settings

Cisco Nexus 9000 A and Cisco Nexus 9000 B

Add NTP Distribution Interface

Create VLANs for VersaStack IP Traffic

Cisco Nexus 9000 A and Cisco Nexus 9000 B

Configure Virtual Port Channel Domain

Configure Network Interfaces for the vPC Peer Links

Configure Network Interfaces to Cisco UCS Fabric Interconnects

Enable UDLD for Cisco UCS Interfaces

Cisco Nexus A and Cisco Nexus B

Configure Network Interfaces Connected to IBM FS9100 iSCSI Ports (iSCSI Deployment)

Management Uplink into Existing Network Infrastructure

Cisco Nexus 9000 A and B using Port Channel Example

Cisco MDS 9132T Configuration (FC Deployment)

VersaStack Cisco MDS Base Configuration

Cisco MDS 9132T Initial Configuration Setup

IBM Service Support Representative (SSR) Configuration

Customer Configuration Setup Tasks via the GUI

System Dashboard, and Post-Initialization Setup Tasks

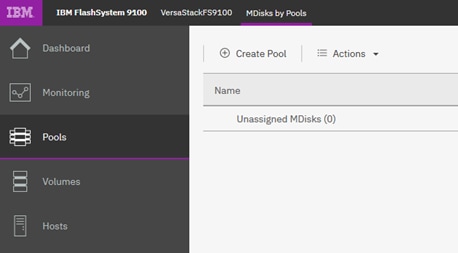

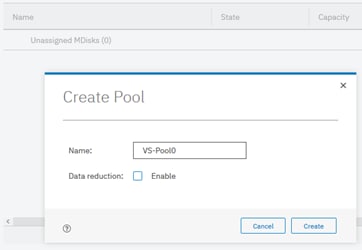

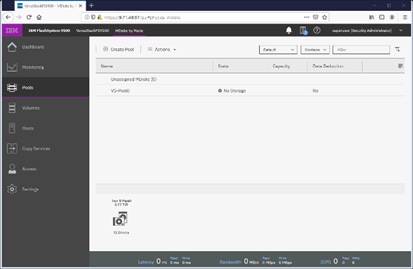

Create Storage Pools and Allocate Storage

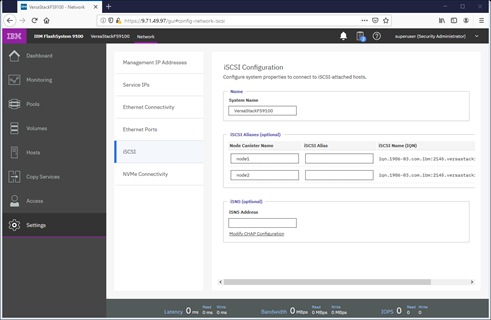

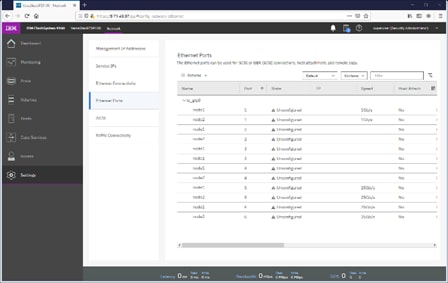

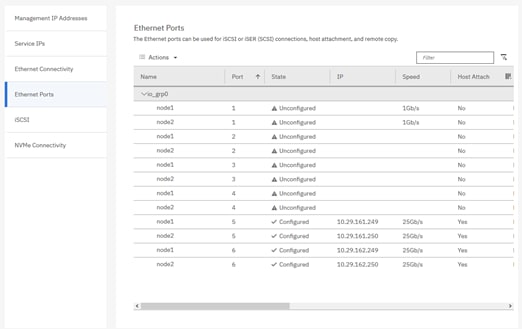

IBM FS9100 iSCSI Configuration (iSCSI Deployment)

Cisco UCS Server Configuration

Cisco UCS Initial Configuration

Upgrade Cisco UCS Manager Software to Version 4.0(4c)

Add a Block of Management IP Addresses for KVM Access

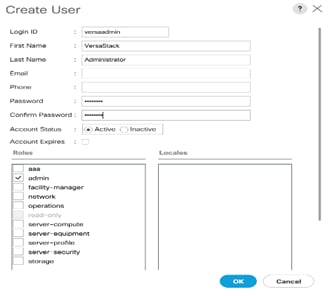

Add an Additional Administrator User

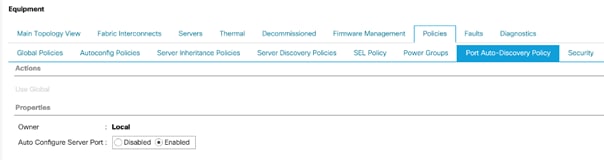

Enable Port Auto-Discovery Policy

Enable Info Policy for Neighbor Discovery

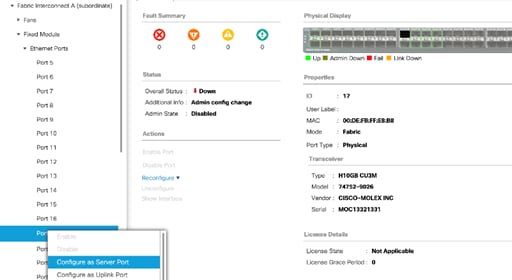

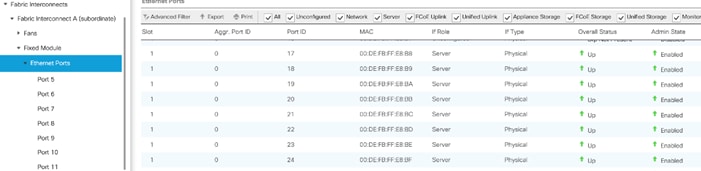

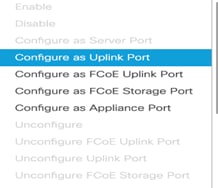

Enable Server and Uplink Ports

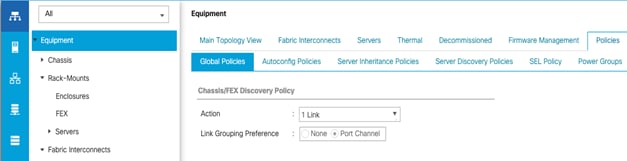

Acknowledge Cisco UCS Chassis and FEX

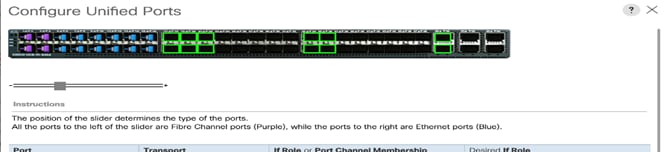

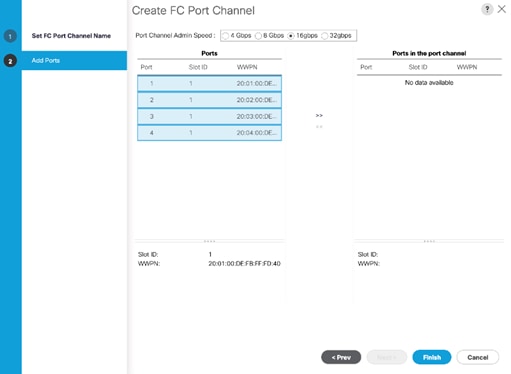

Enable Fibre Channel Ports (FC Deployment)

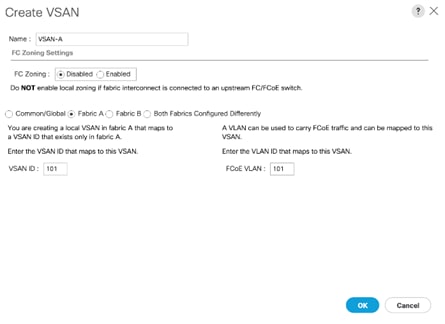

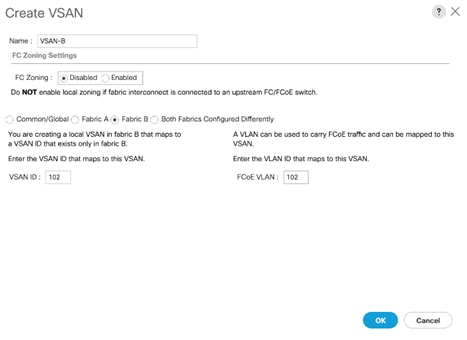

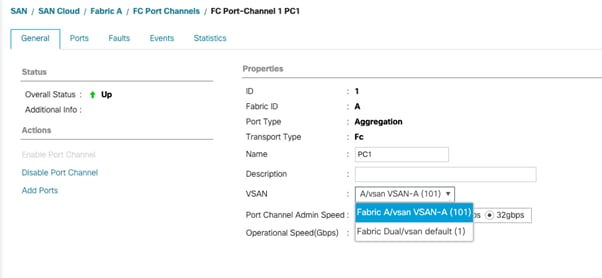

Create VSAN for the Fibre Channel Interfaces

Create Port Channels for the Fibre Channel Interfaces

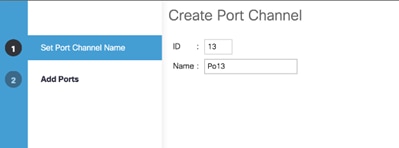

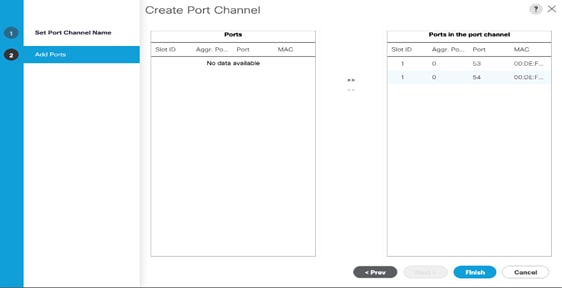

Create Port Channels for Ethernet Uplinks

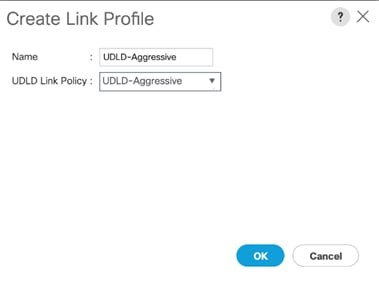

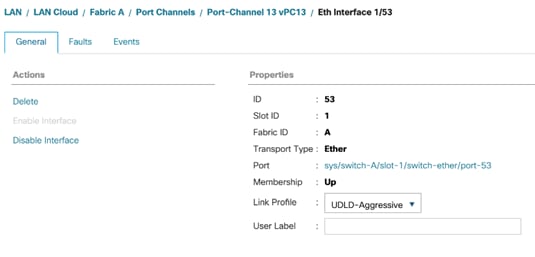

Add UDLD to Uplink Port Channels

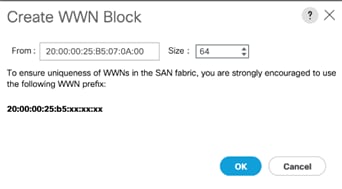

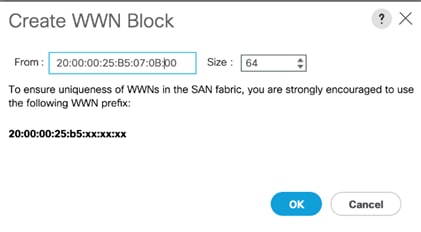

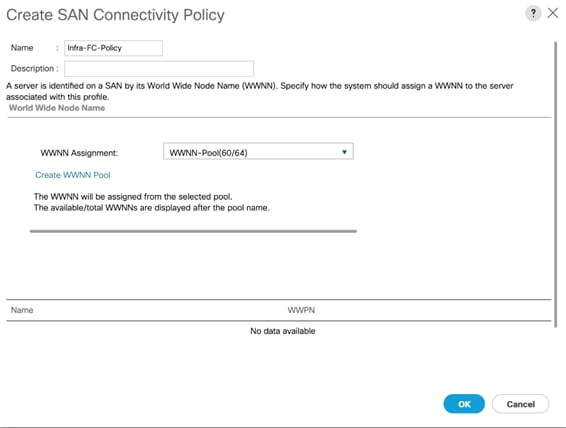

Create a WWNN Address Pool for FC based Storage Access

Create a WWPN Address Pools for FC Based Storage Access

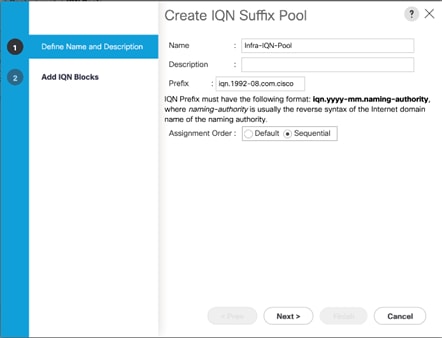

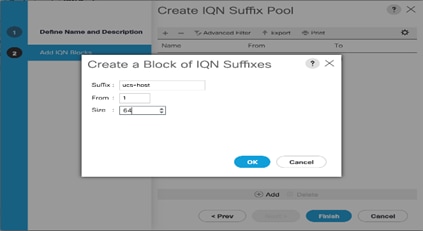

Create IQN Pools for iSCSI Boot and LUN Access (iSCSI Deployment)

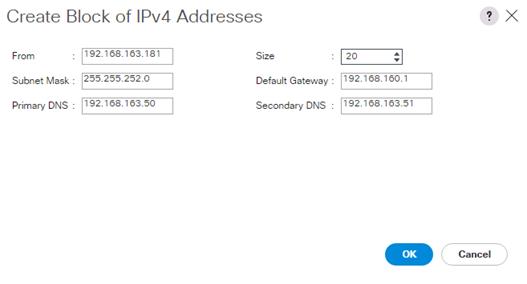

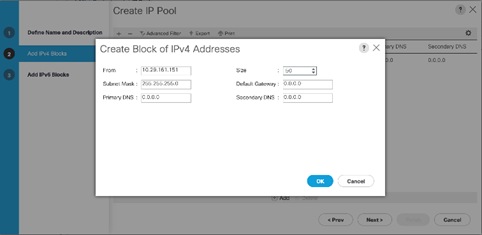

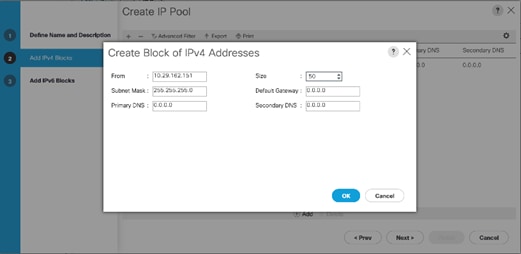

Create IP Pools for iSCSI Boot and LUN Access (iSCSI Deployment)

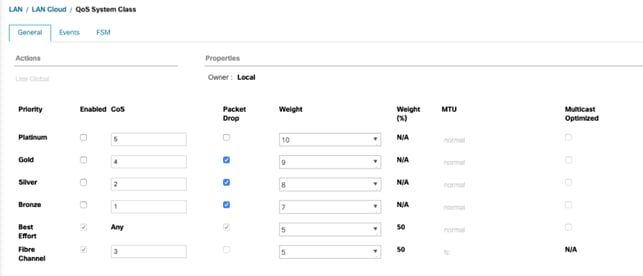

Set Jumbo Frames in Cisco UCS Fabric

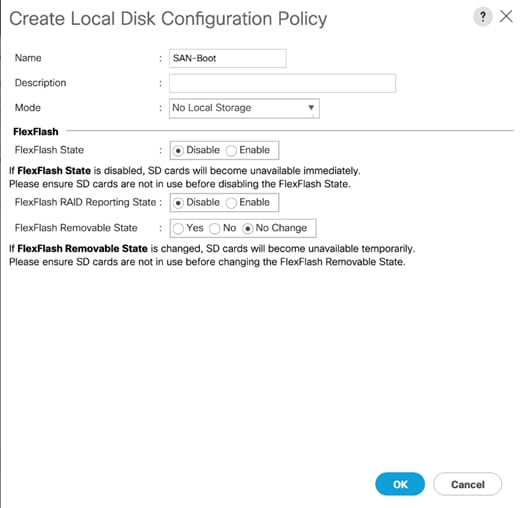

Create Local Disk Configuration Policy

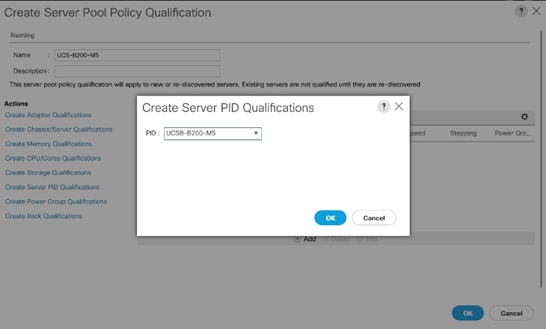

Create Server Pool Qualification Policy (Optional)

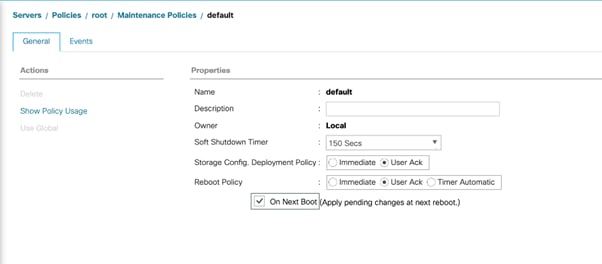

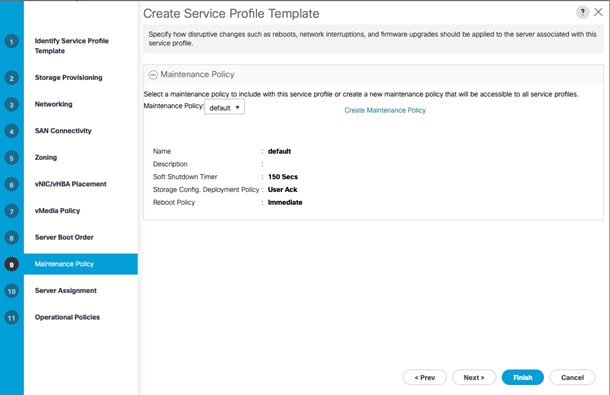

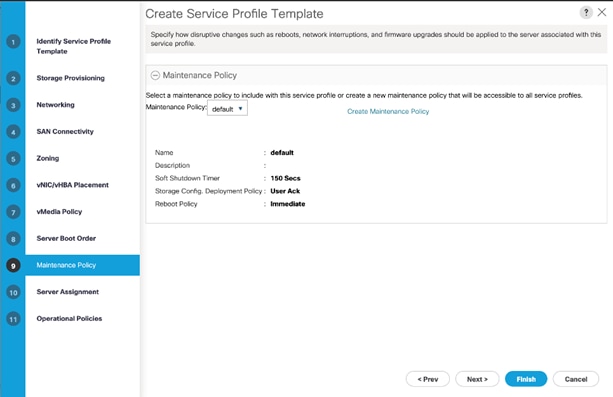

Update Default Maintenance Policy

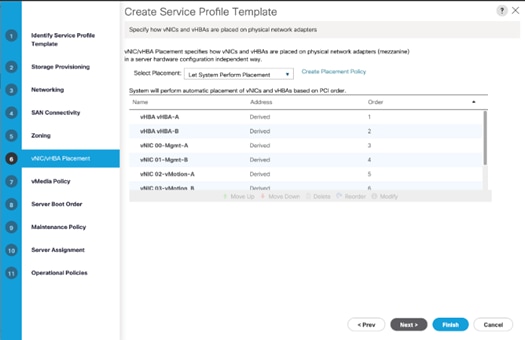

Create vNIC/vHBA Placement Policy

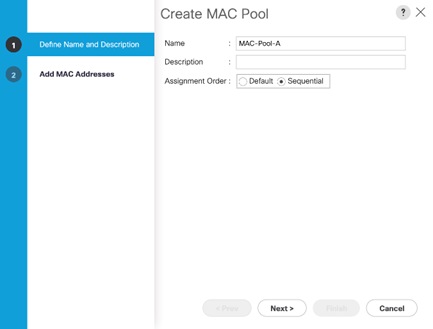

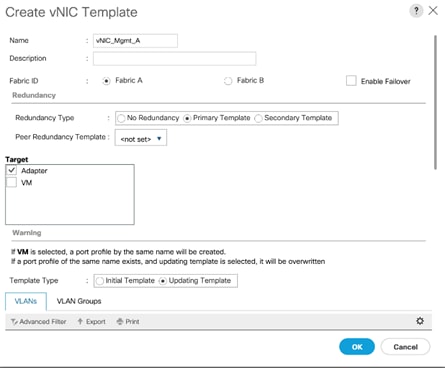

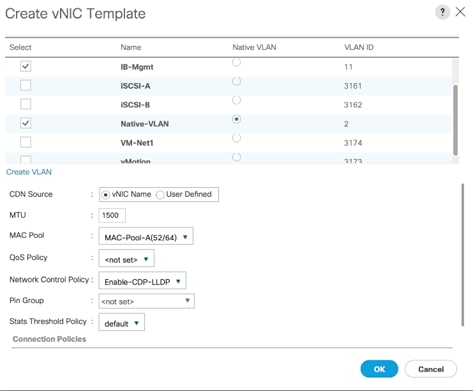

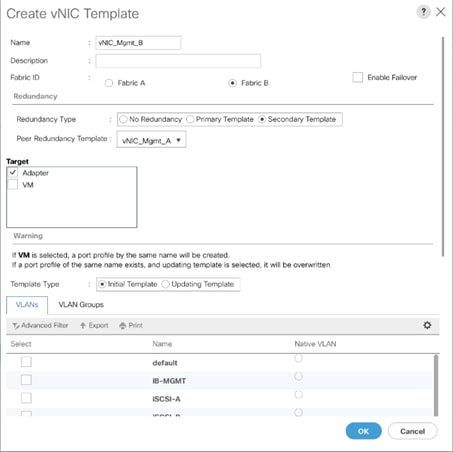

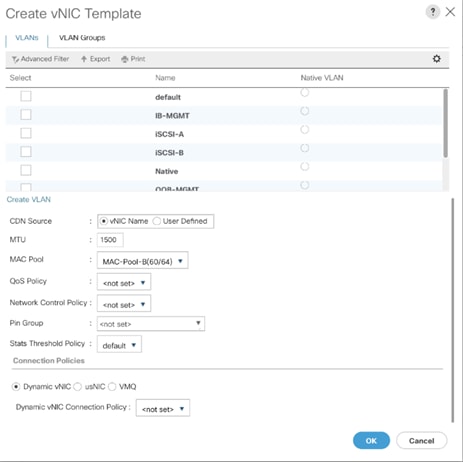

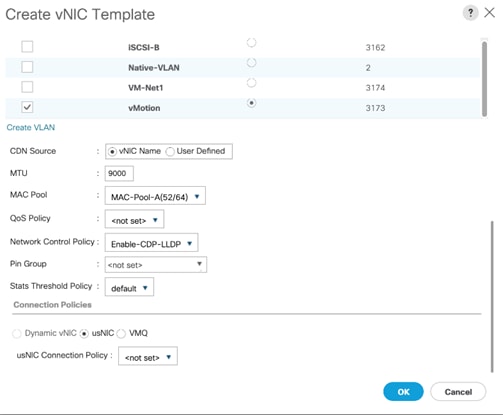

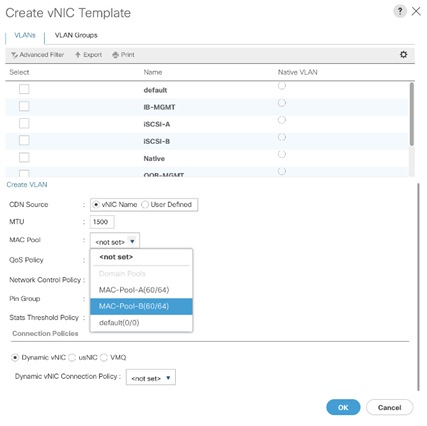

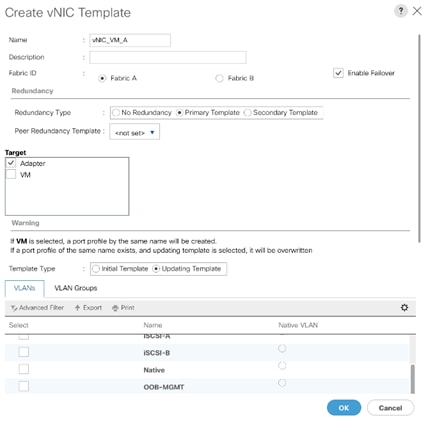

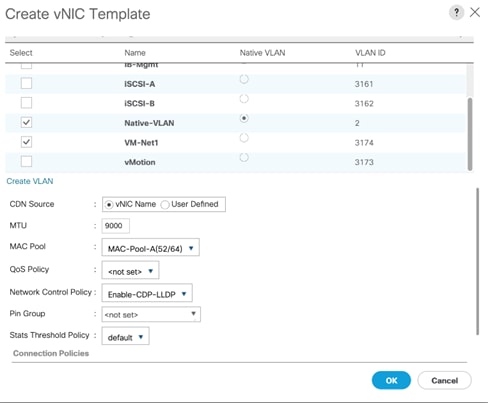

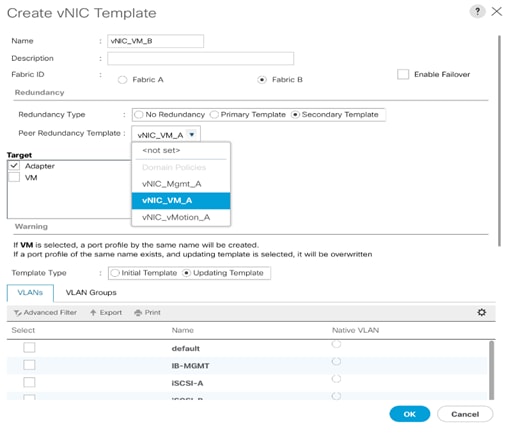

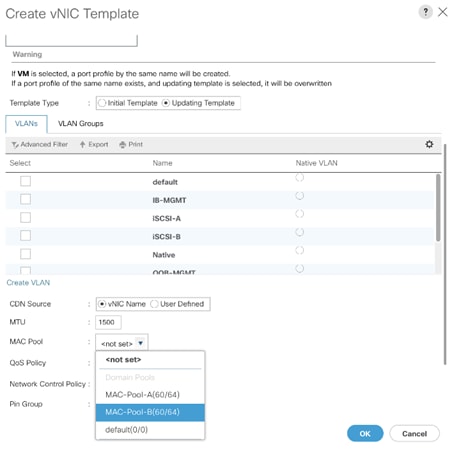

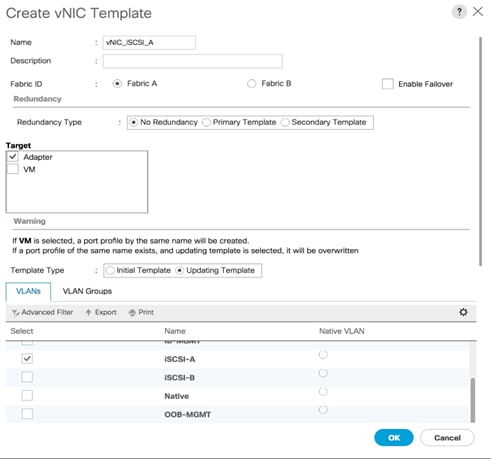

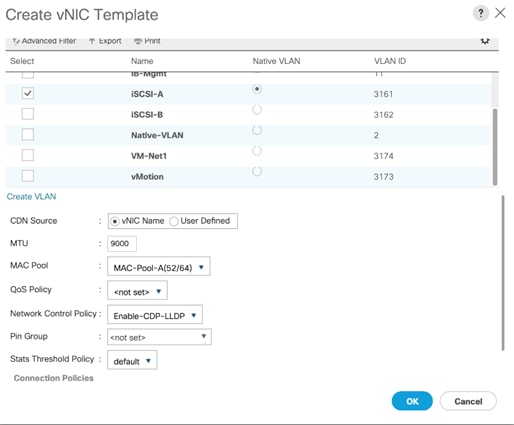

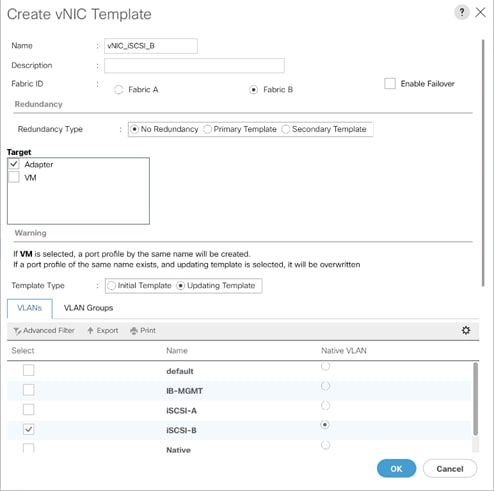

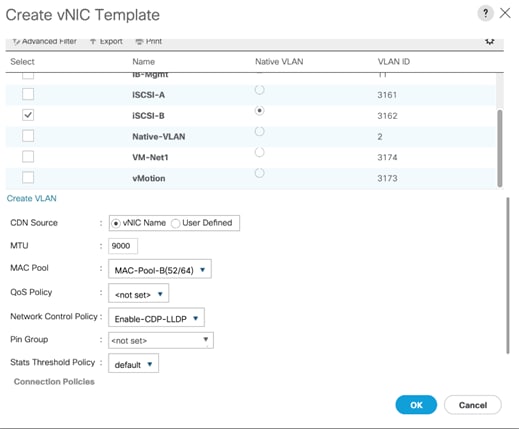

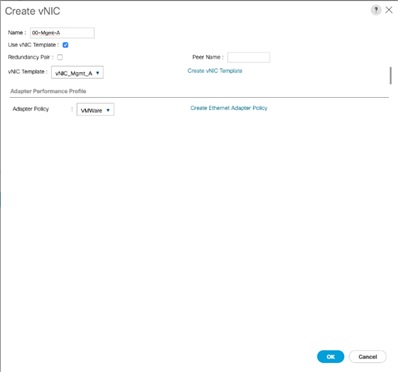

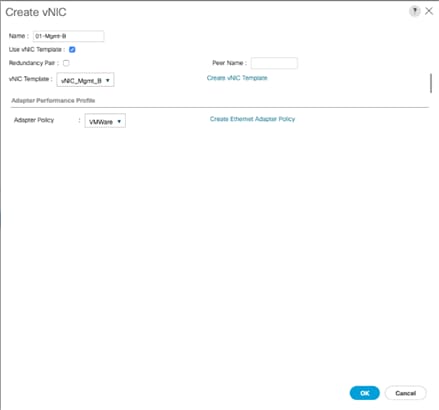

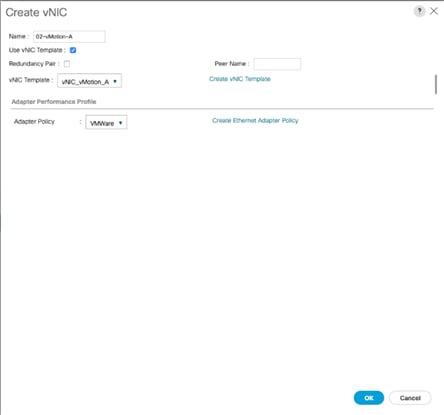

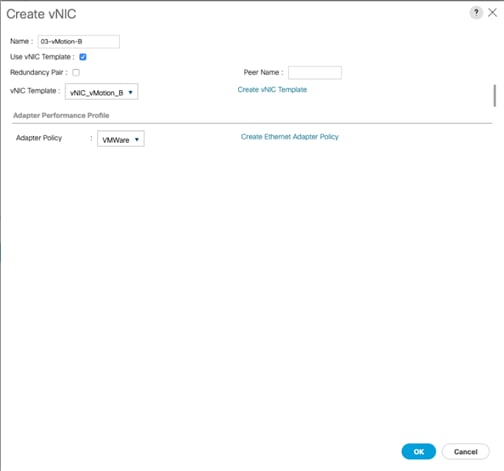

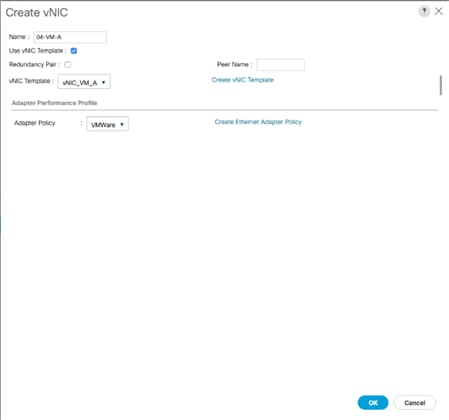

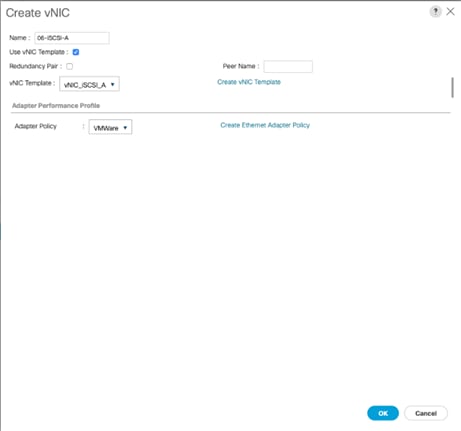

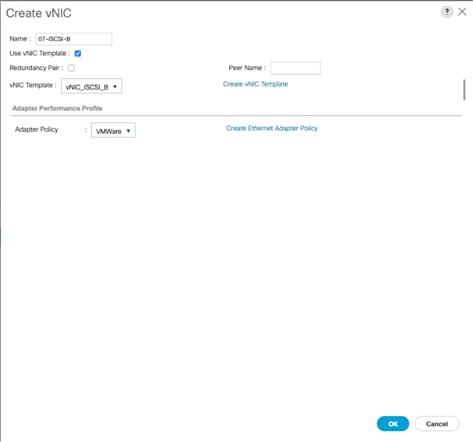

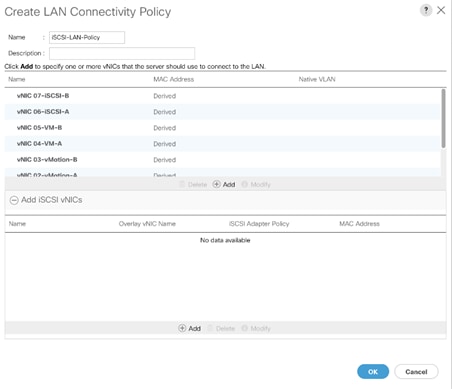

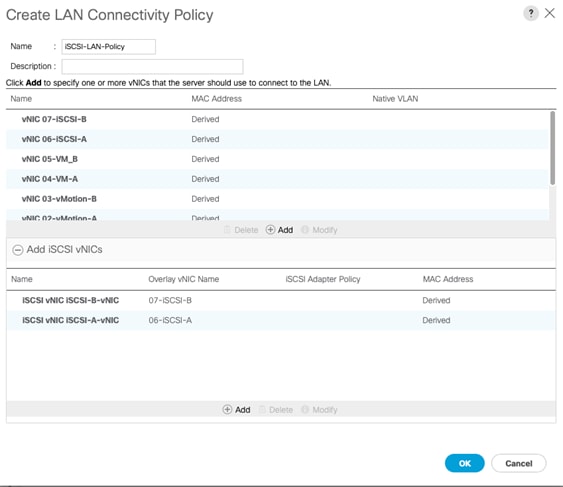

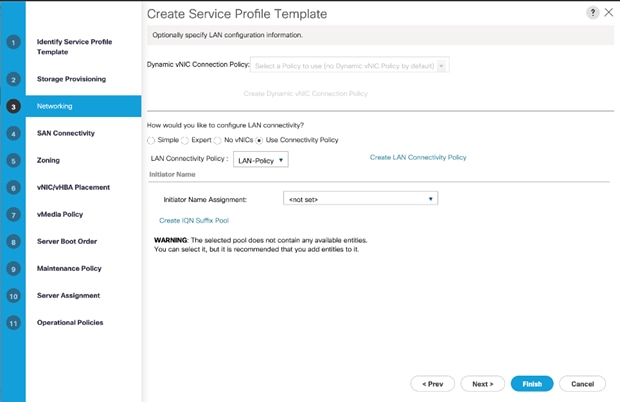

Create LAN Connectivity Policy

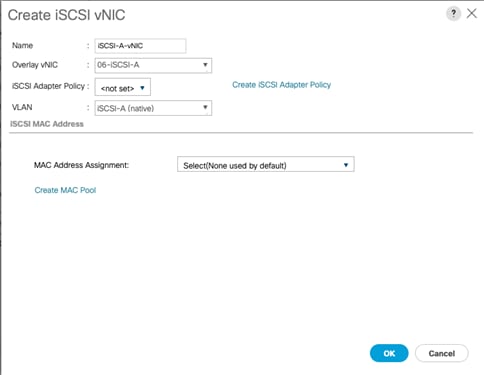

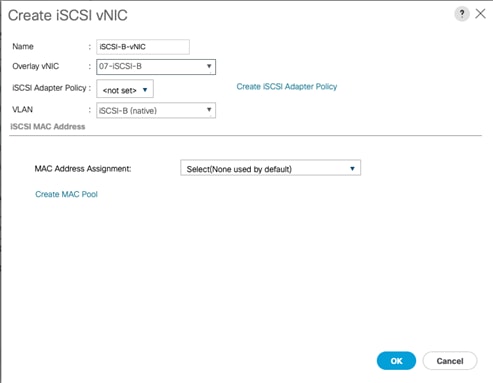

Add iSCSI vNICs in LAN Policy (iSCSI Deployment)

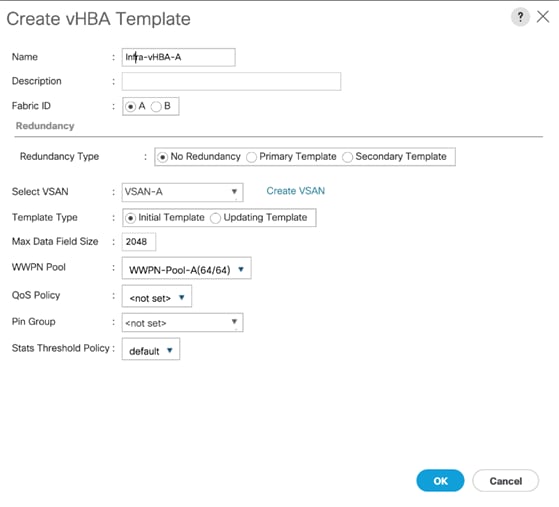

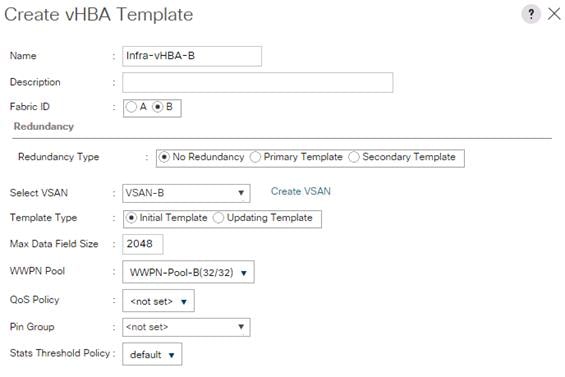

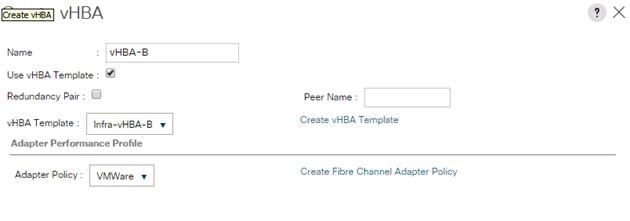

Create vHBA Templates for FC Connectivity (FC Deployment)

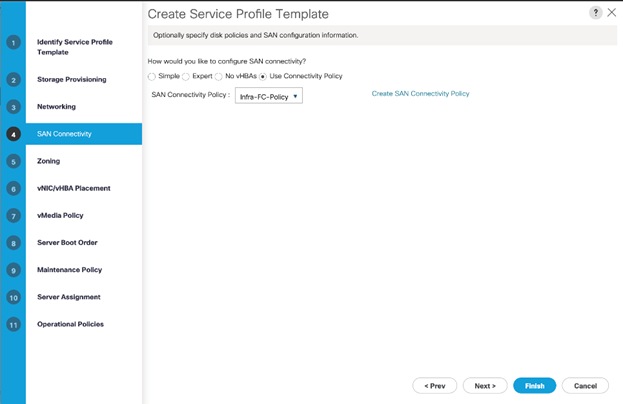

Create FC SAN Connectivity Policies (FC Deployment)

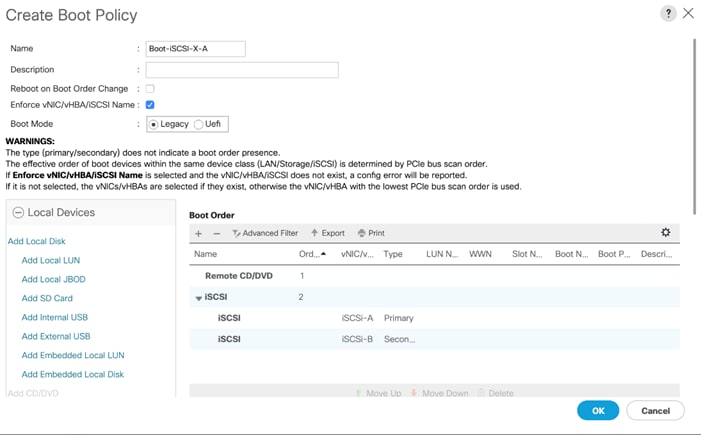

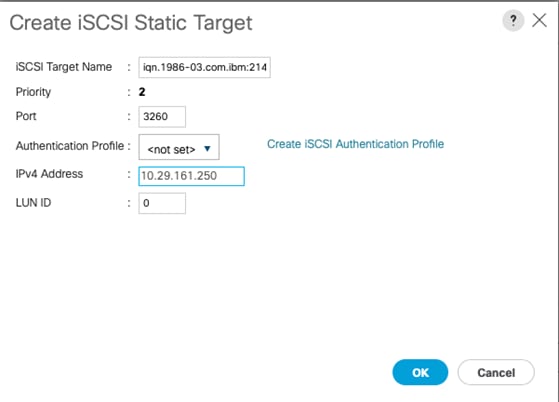

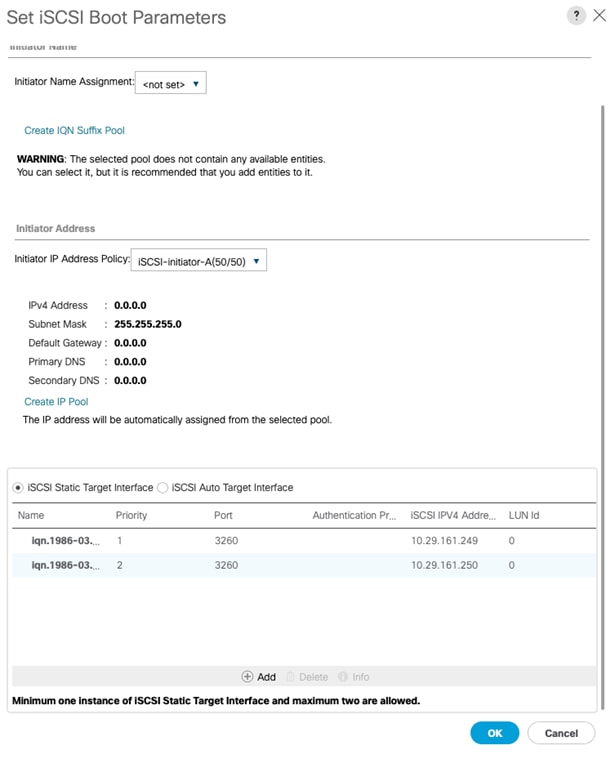

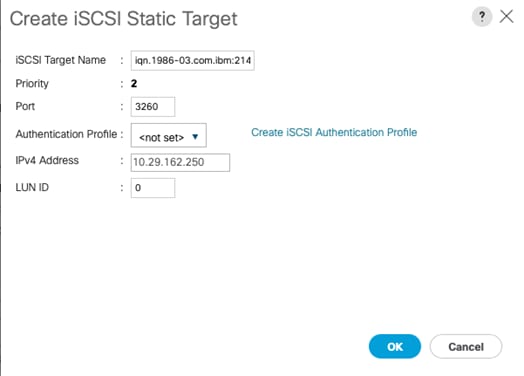

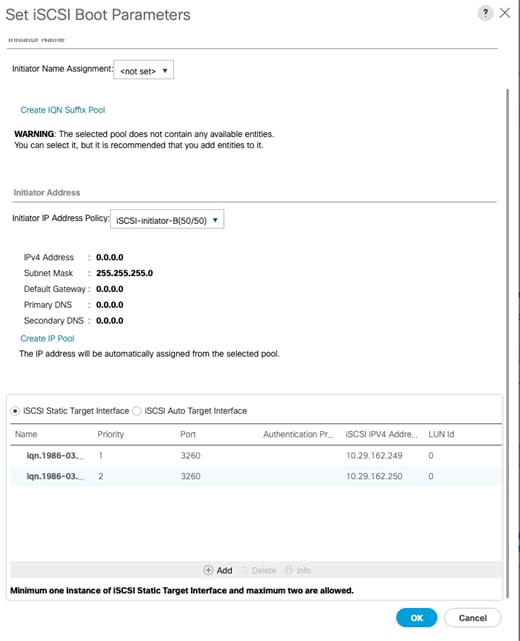

Create iSCSI Boot Policy (iSCSI Deployment)

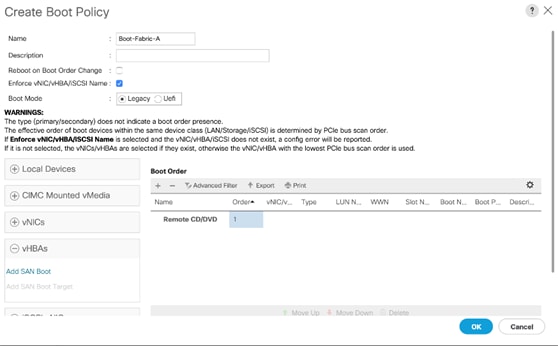

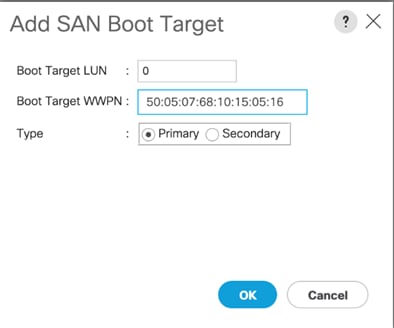

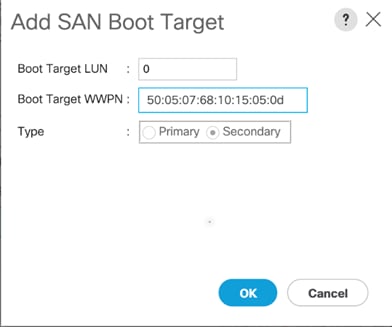

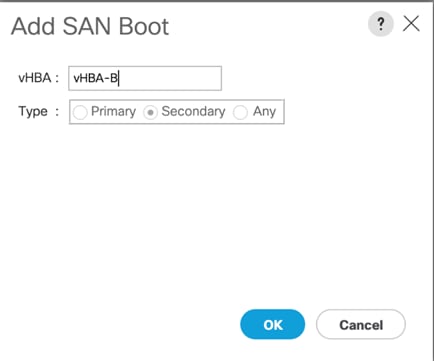

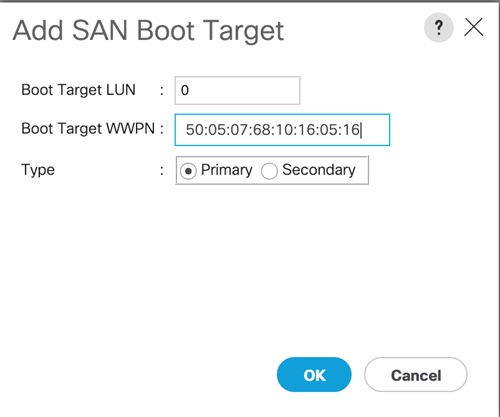

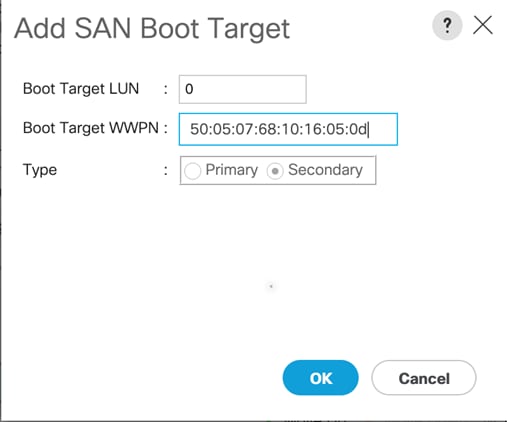

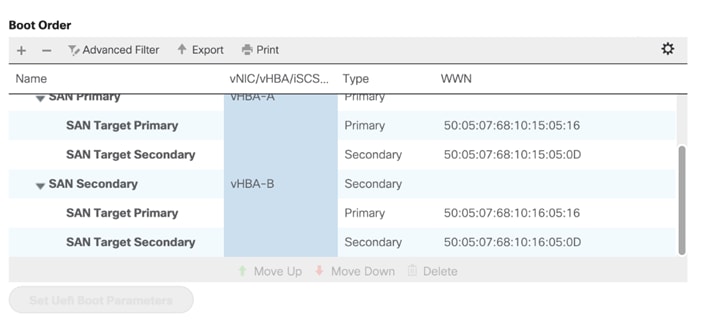

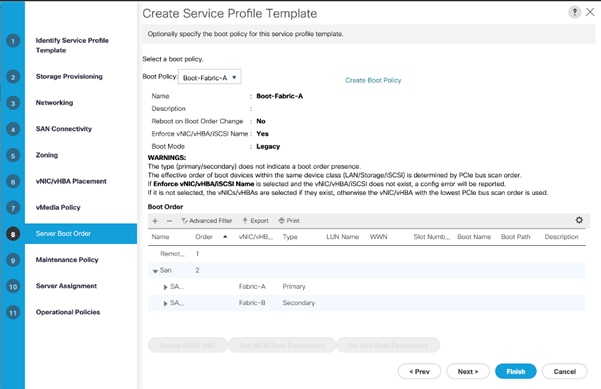

Create FC Boot Policies (FC Deployment)

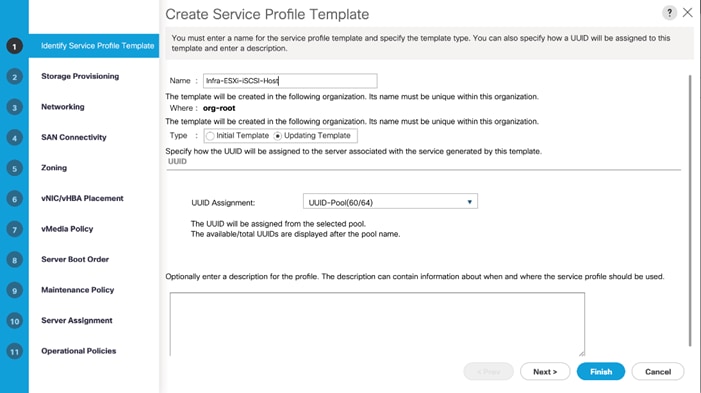

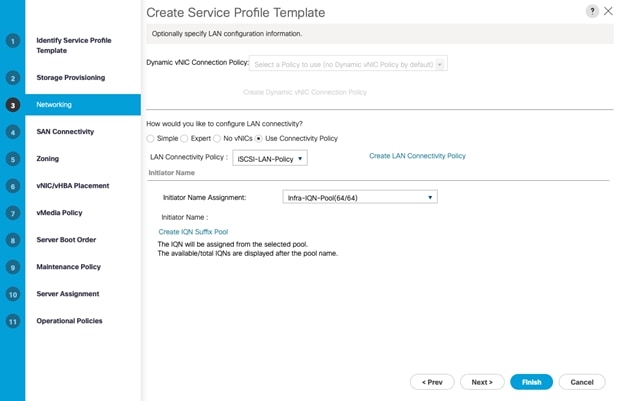

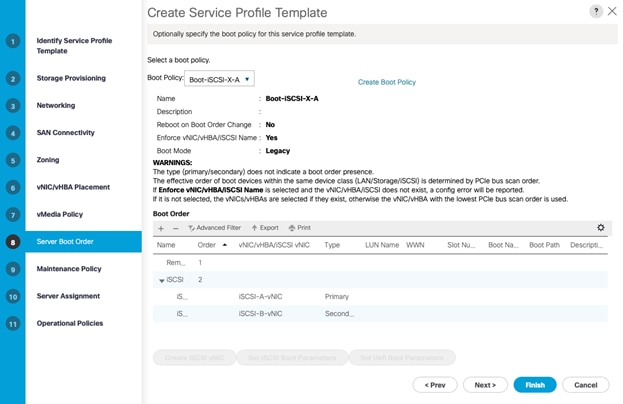

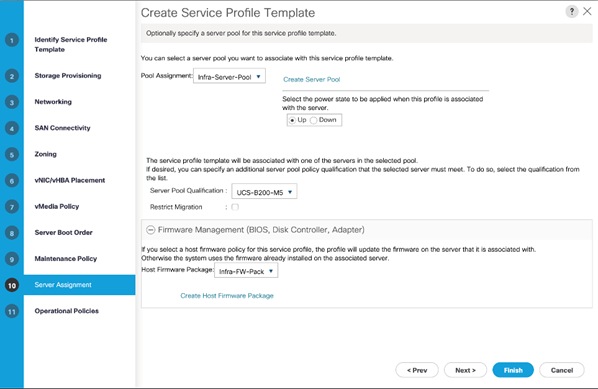

Create iSCSI Boot Service Profile Template (iSCSI Deployment)

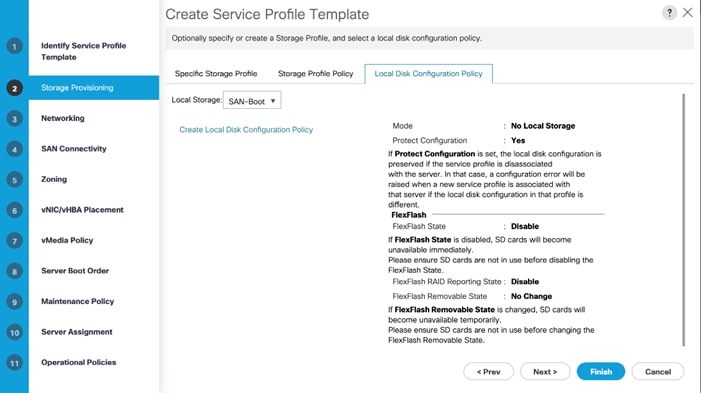

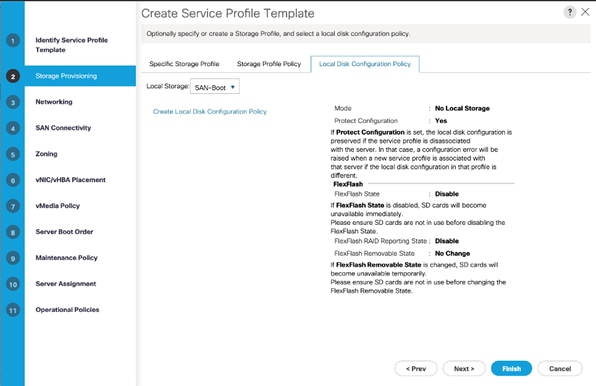

Configure Storage Provisioning

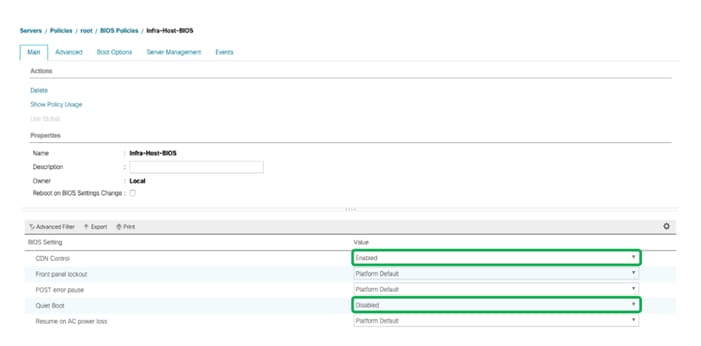

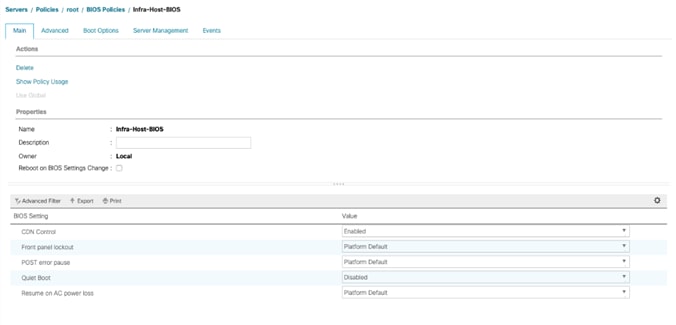

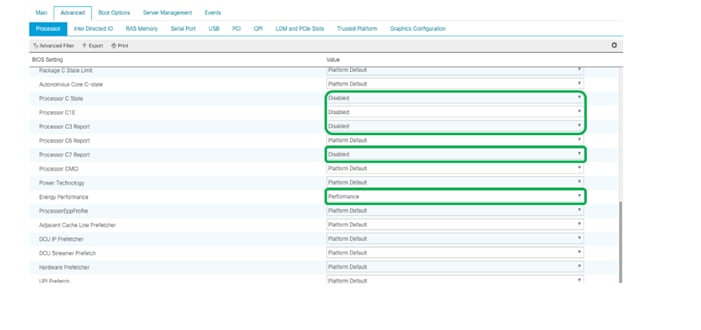

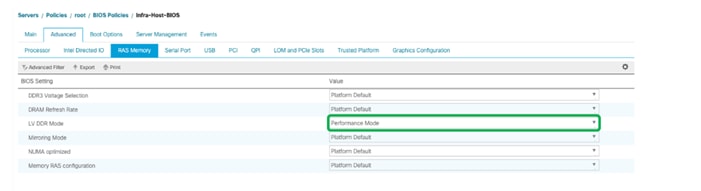

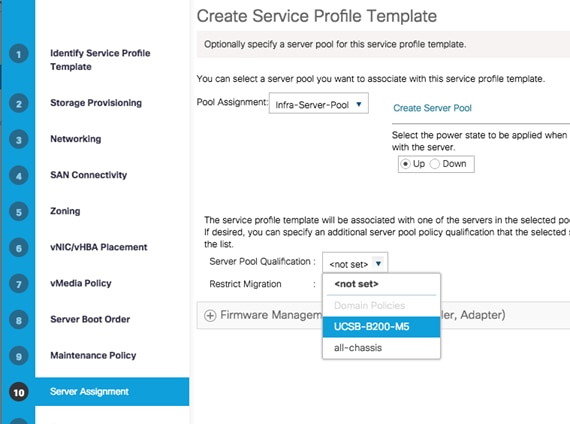

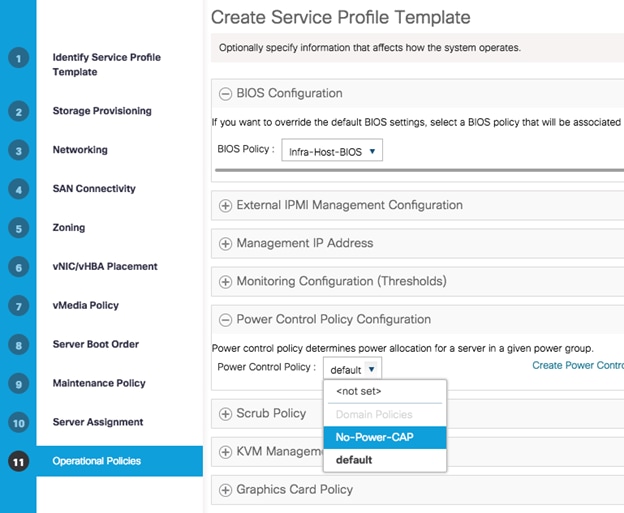

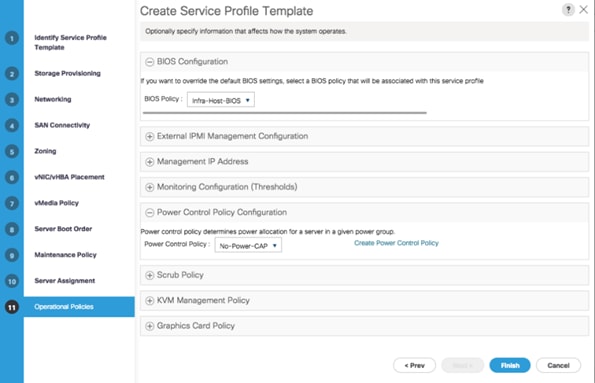

Configure Operational Policies

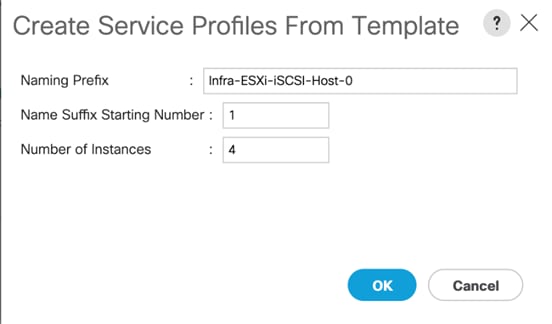

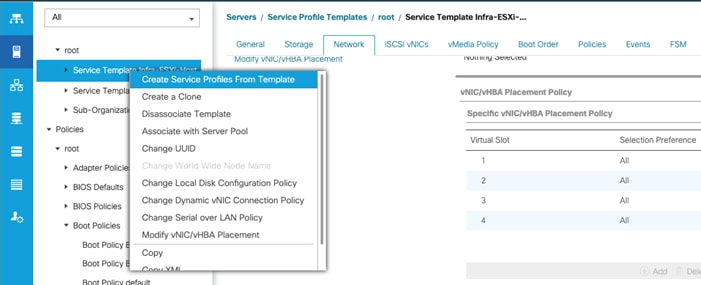

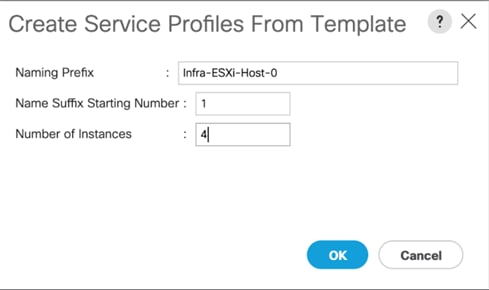

Create iSCSI Boot Service Profiles (iSCSI Deployment)

Create FC Boot Service Profile Template (FC Deployment)

Configure Storage Provisioning

Configure Operational Policies

Create FC Boot Service Profiles (FC Deployment)

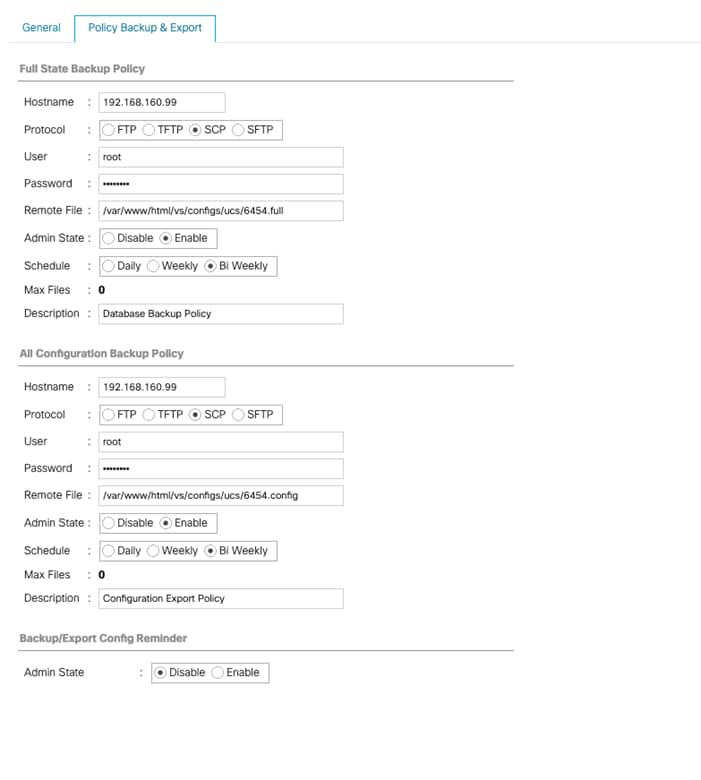

Backup the Cisco UCS Manager Configuration

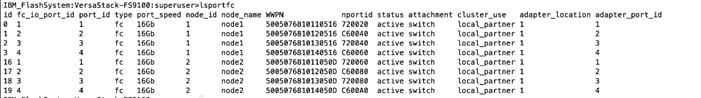

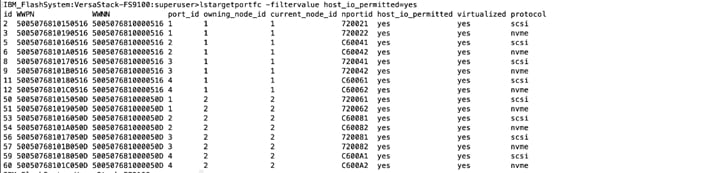

Gather Necessary WWPN Information (FC Deployment)

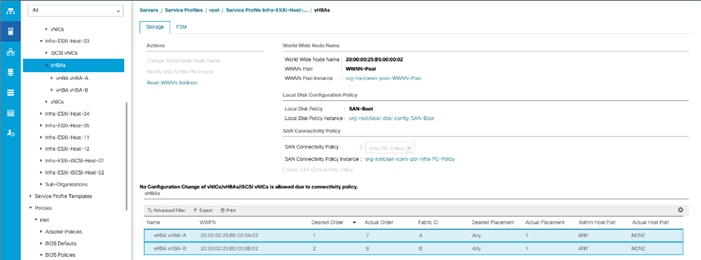

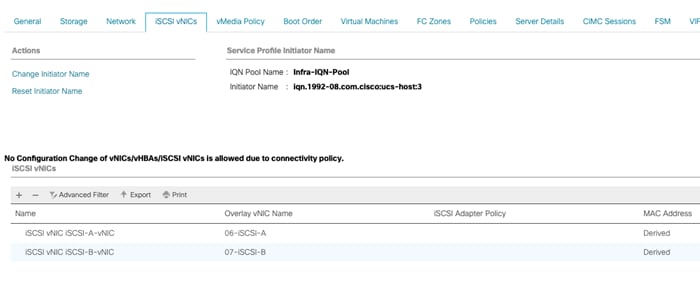

Gather Necessary IQN Information (iSCSI Deployment)

IBM FS9100 iSCSI Storage Configuration (iSCSI Deployment)

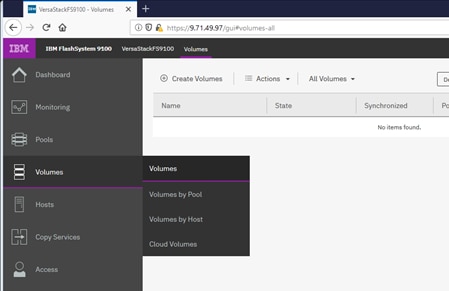

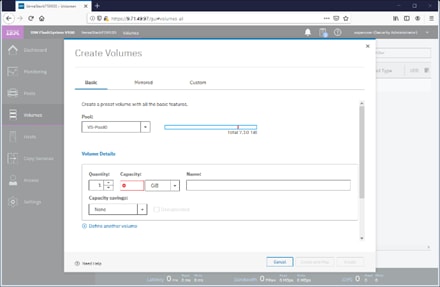

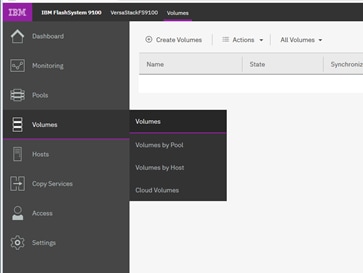

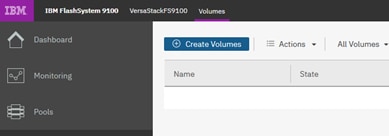

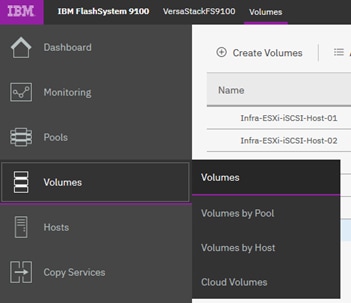

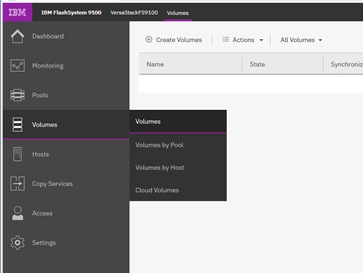

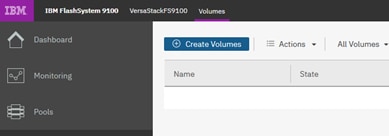

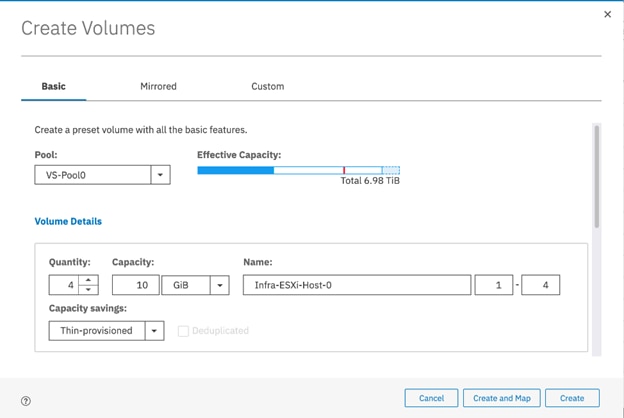

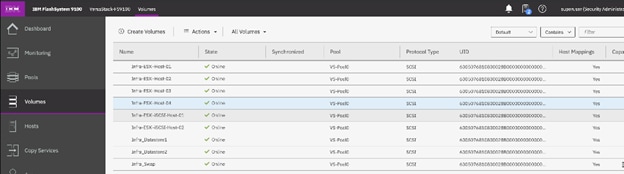

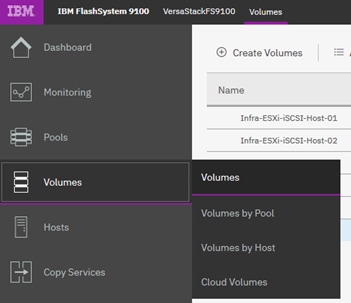

Create Volumes on the Storage System

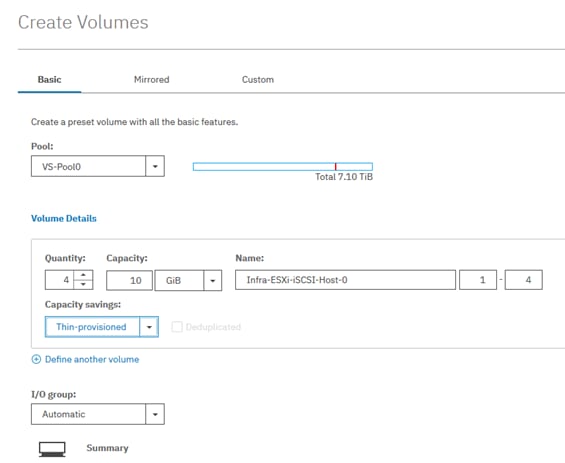

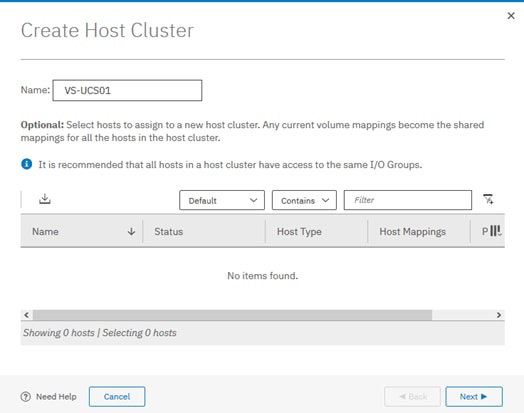

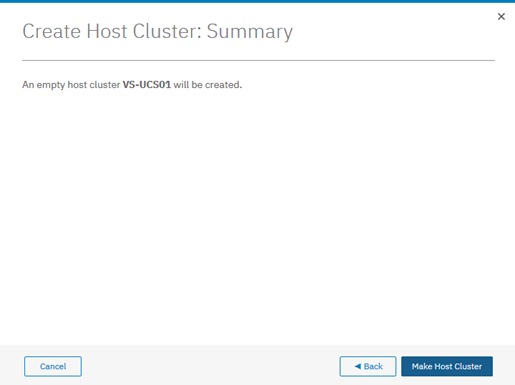

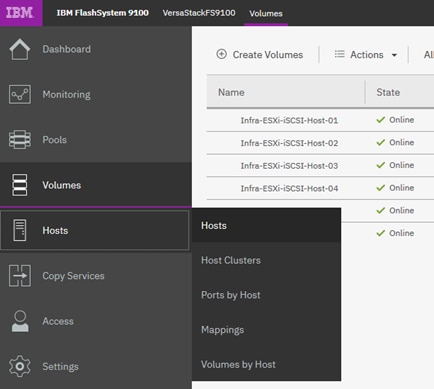

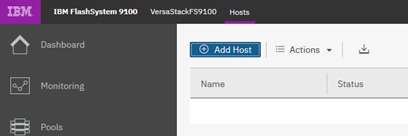

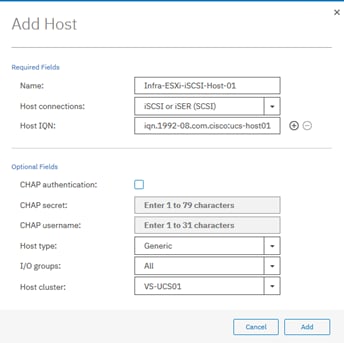

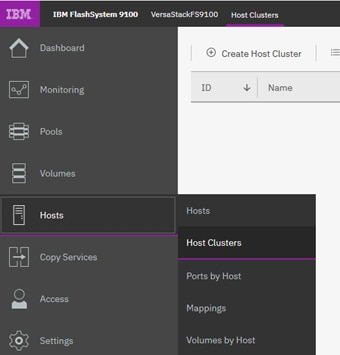

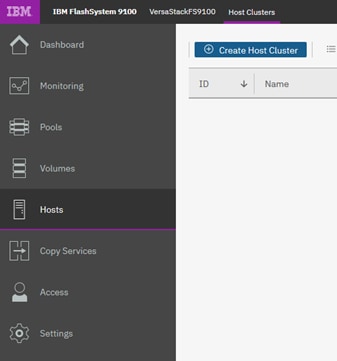

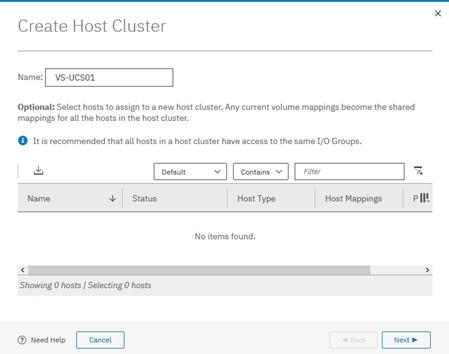

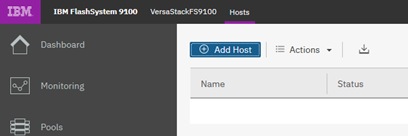

Create Host Cluster & Host objects

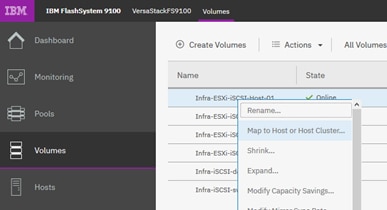

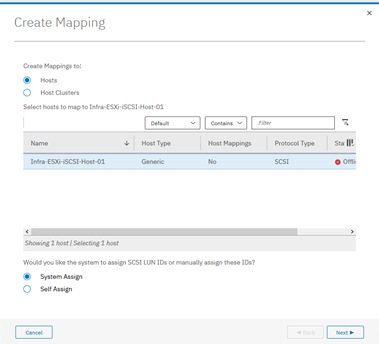

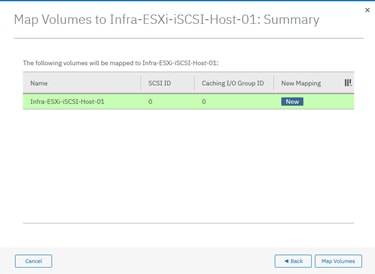

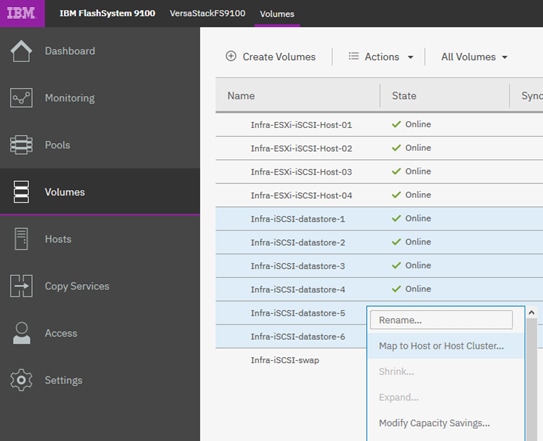

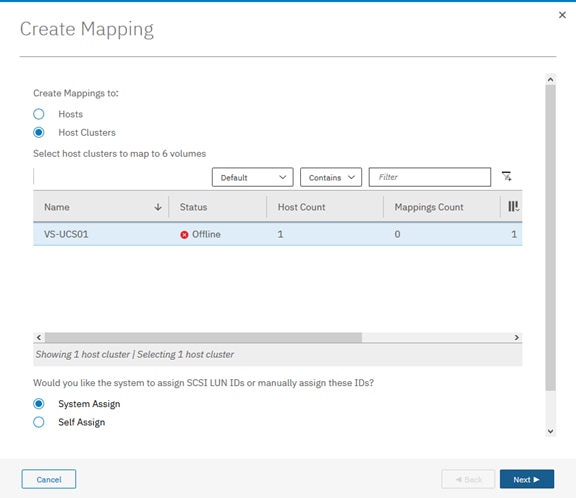

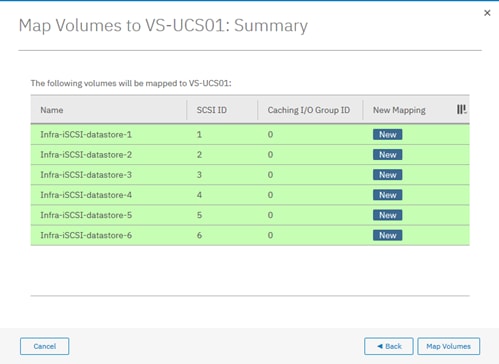

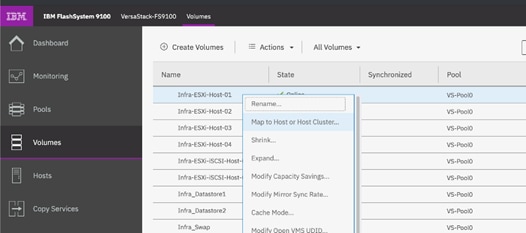

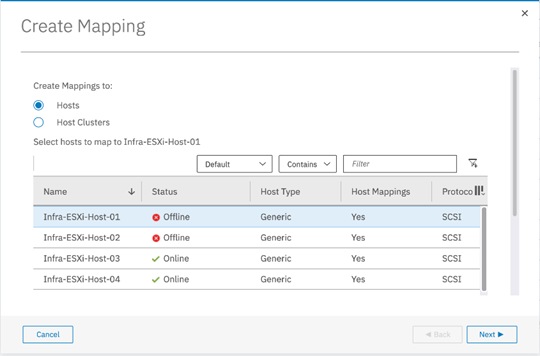

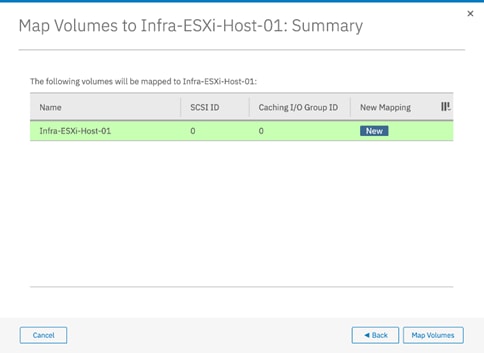

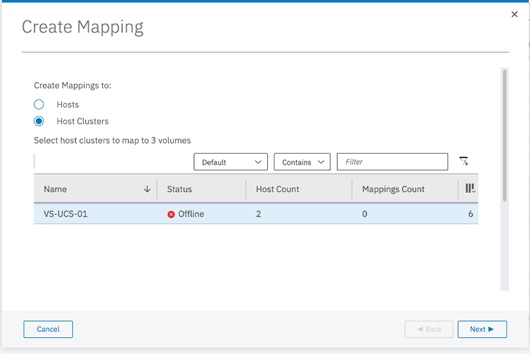

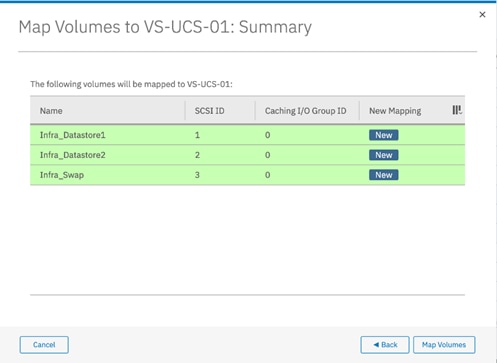

Map Volumes to Hosts and Host Cluster

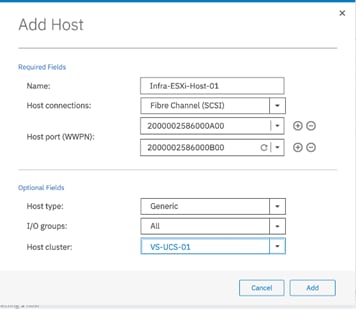

IBM FS9100 Fibre Channel Storage Configuration (FC Deployment)

Create Device Aliases and SAN Zoning

Create Volumes on the Storage System

Create Host Cluster & Host Objects

Map Volumes to Hosts and Host Cluster

VMware vSphere Setup for Cisco UCS Host Environment

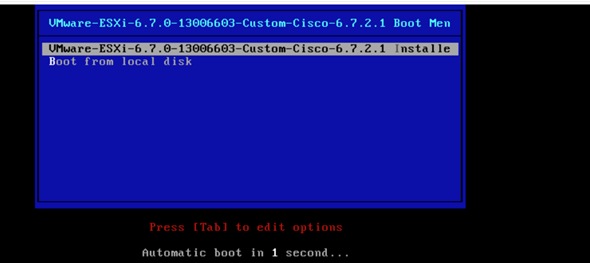

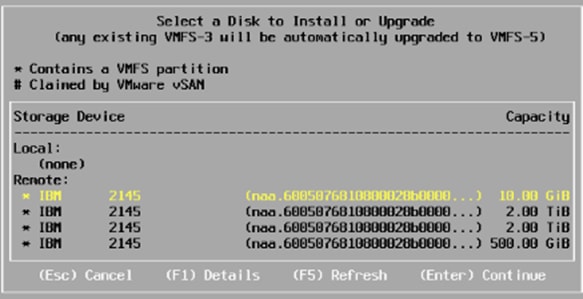

Install ESXi on the UCS Servers

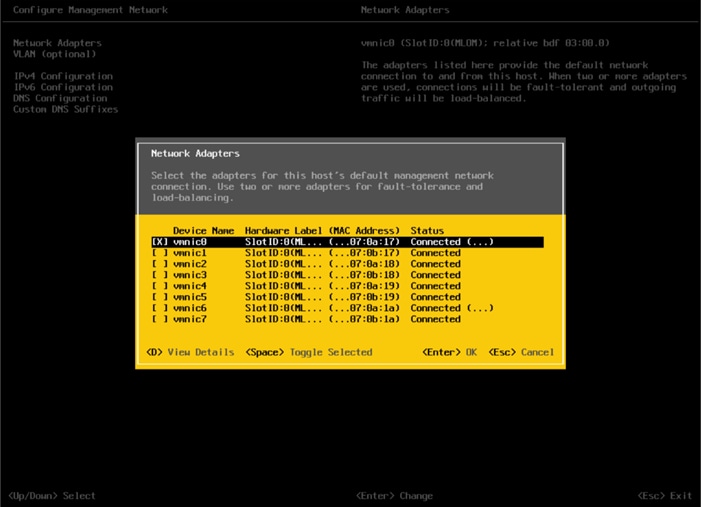

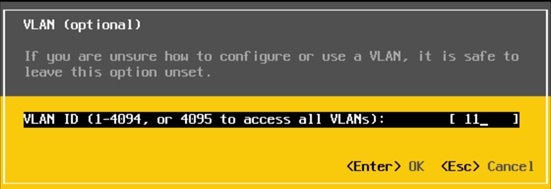

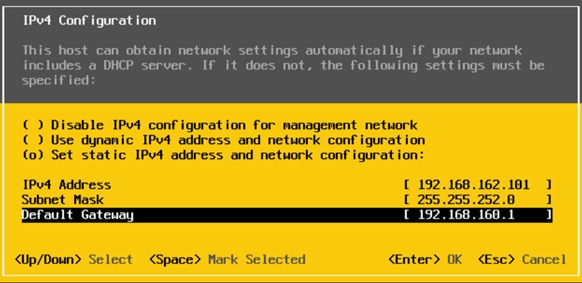

Set Up Management Networking for ESXi Hosts

Log into VMware ESXi Hosts Using VMware vSphere Client

Install VMware Drivers for the Cisco Virtual Interface Card (VIC)

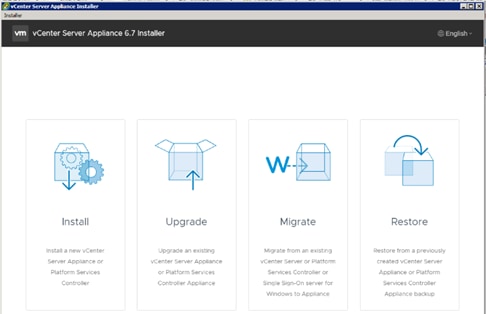

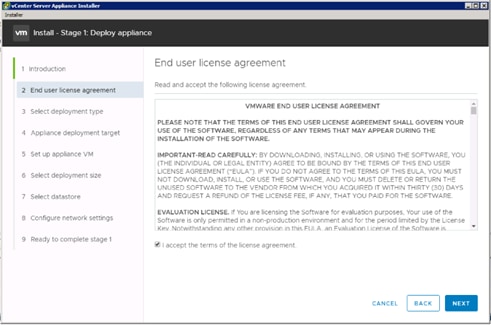

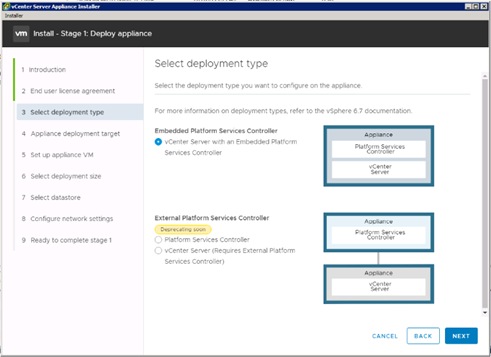

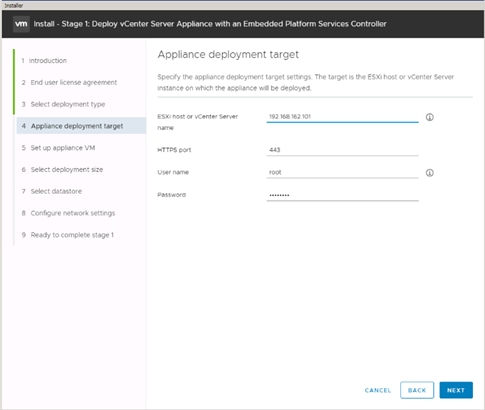

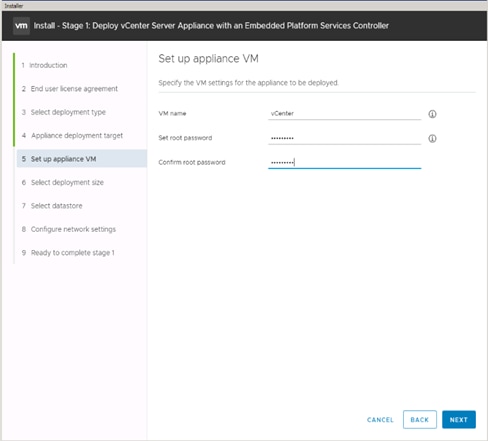

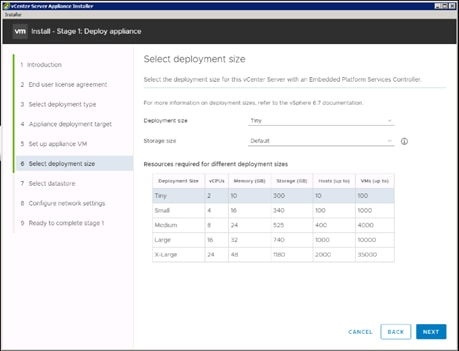

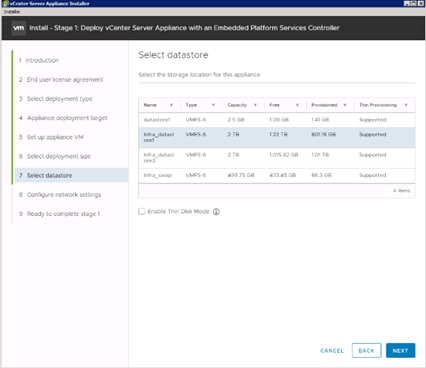

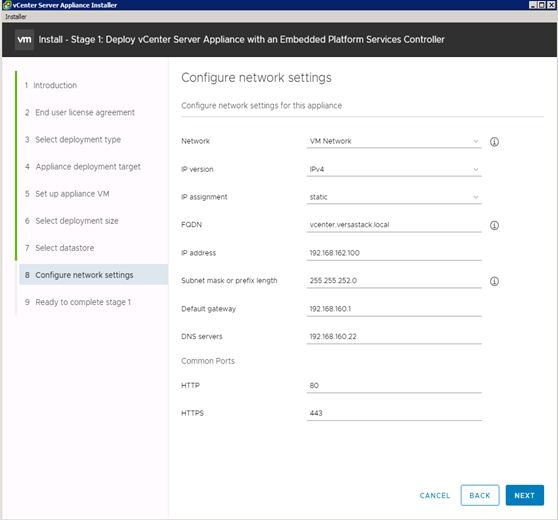

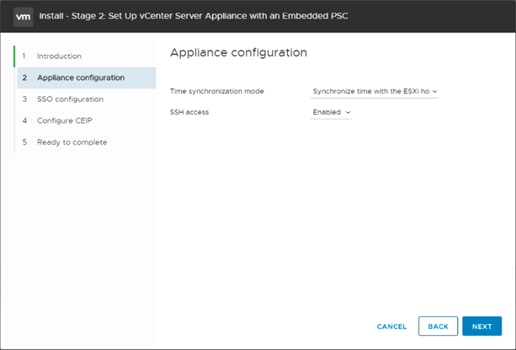

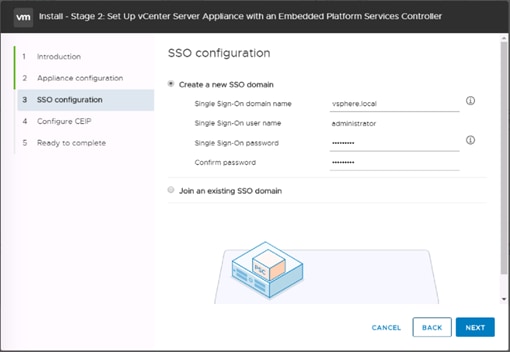

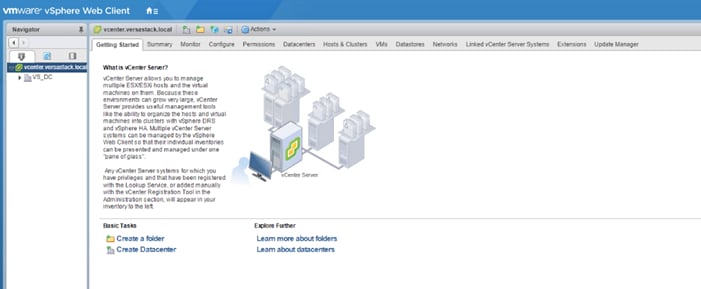

Deploy VMware vCenter Appliance 6.7 (Optional)

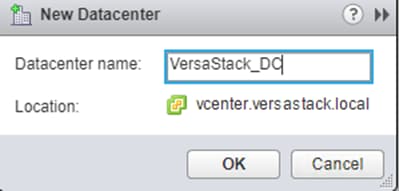

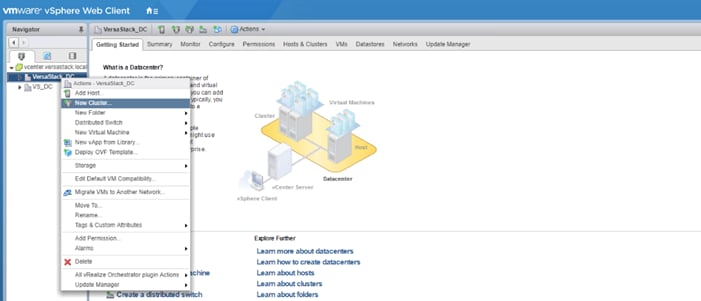

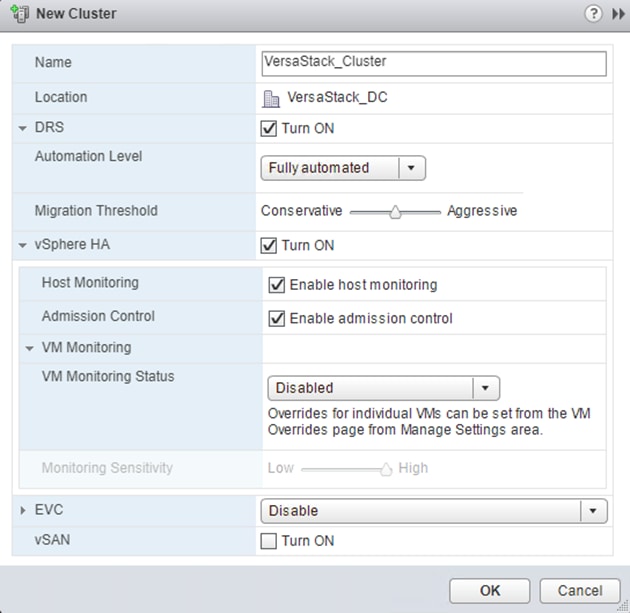

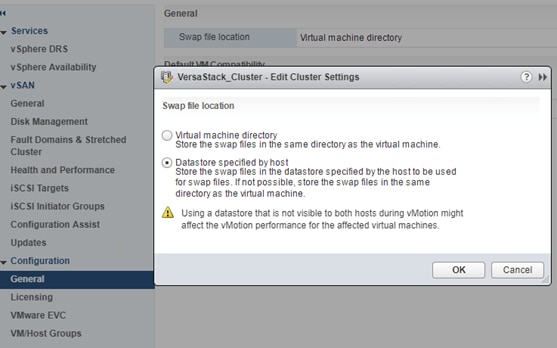

Setup Data Center, Cluster, DRS and HA for ESXi Nodes

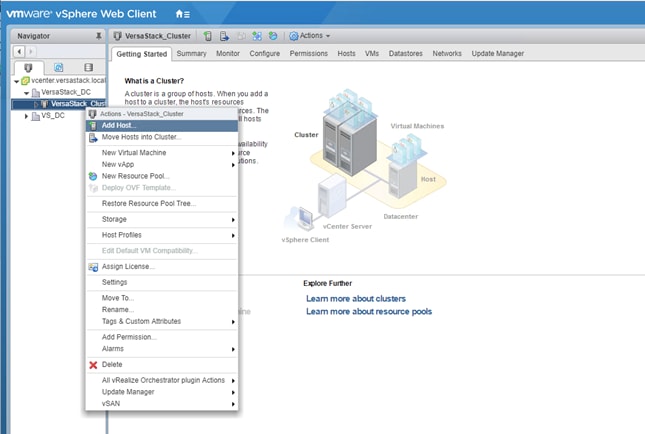

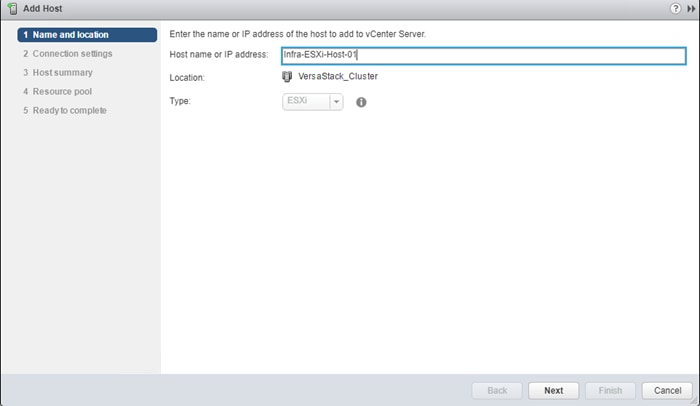

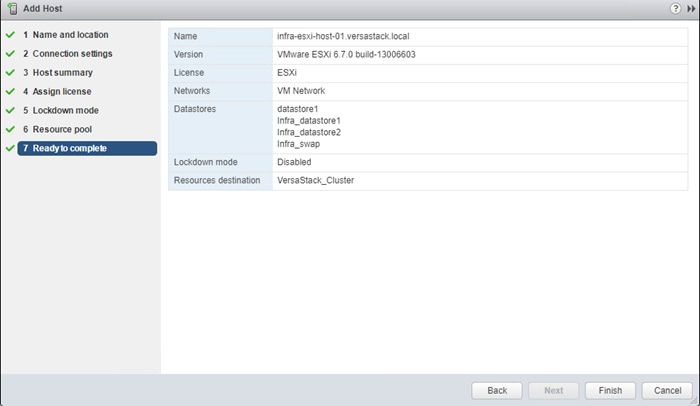

Add the VMware ESXi Hosts Using the VMware vSphere Web Client

ESXi Dump Collector Setup for iSCSI Hosts (iSCSI Configuration Only)

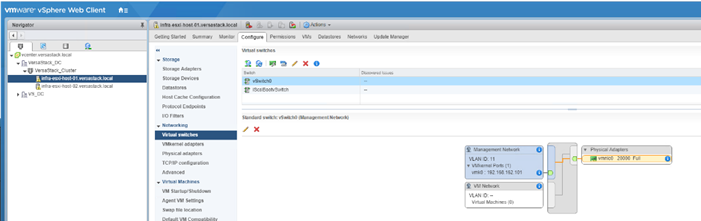

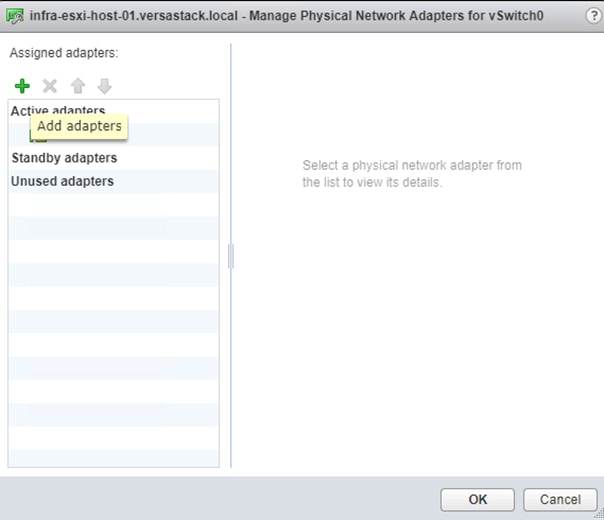

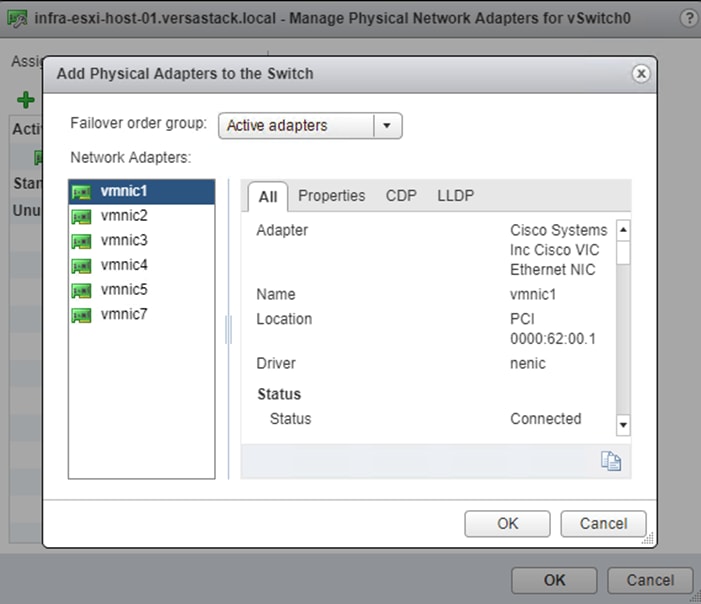

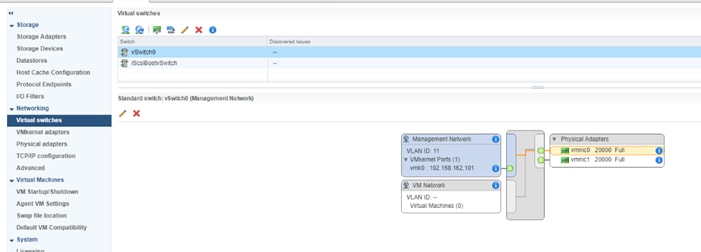

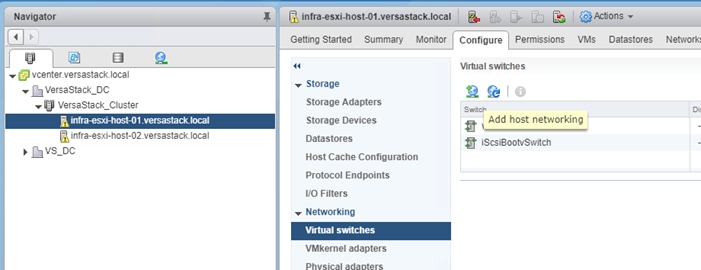

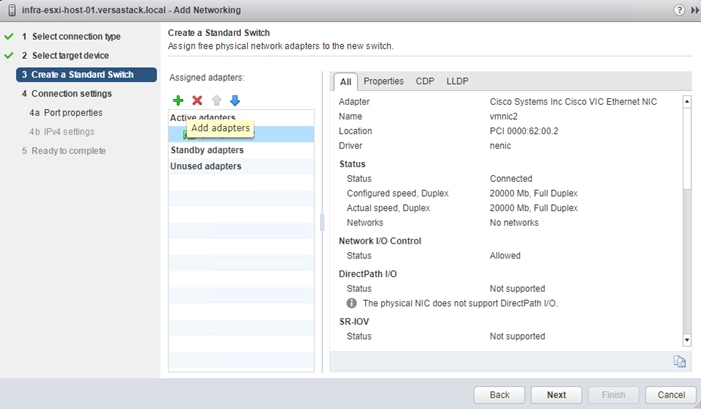

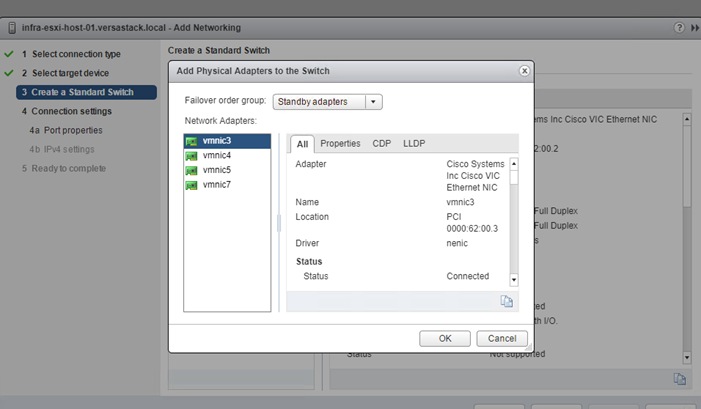

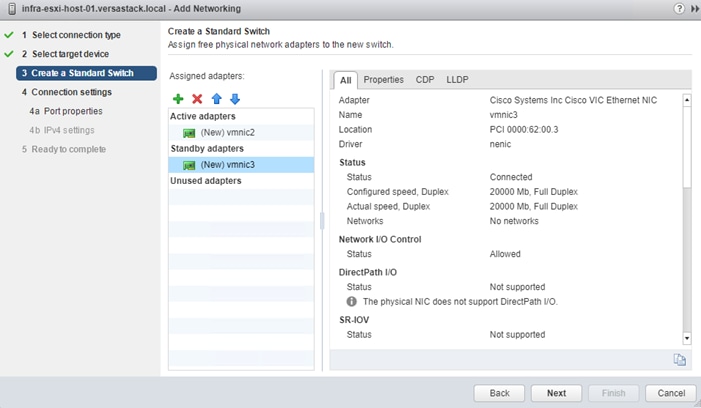

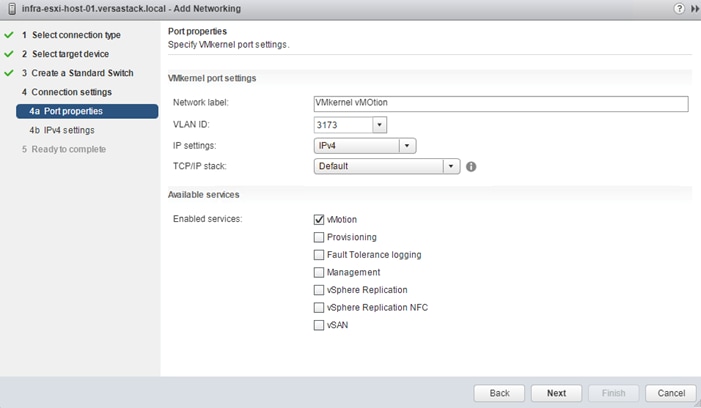

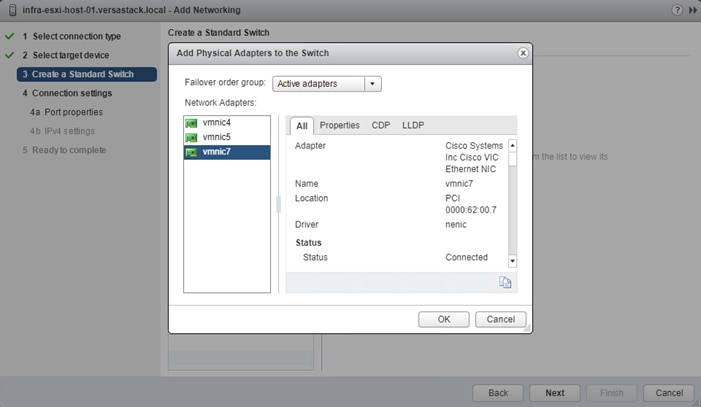

Update Management vSwitch0 Configuration

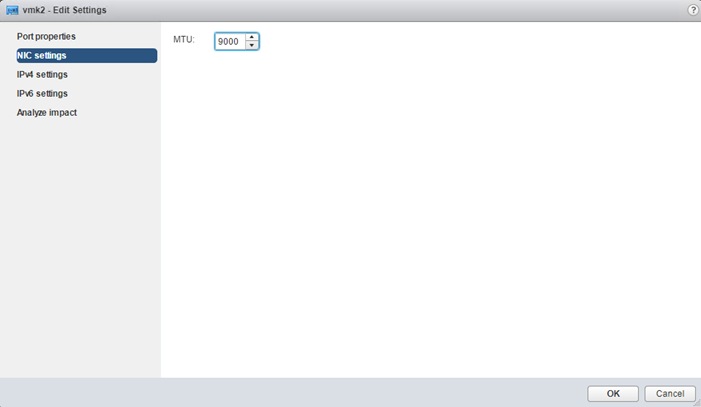

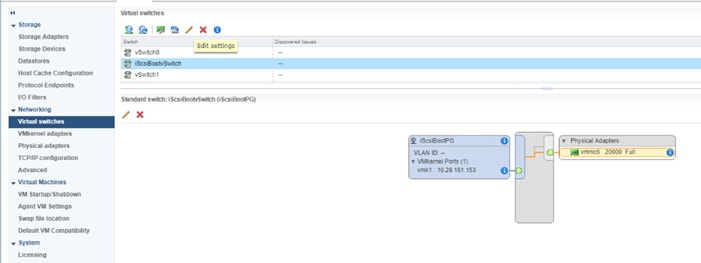

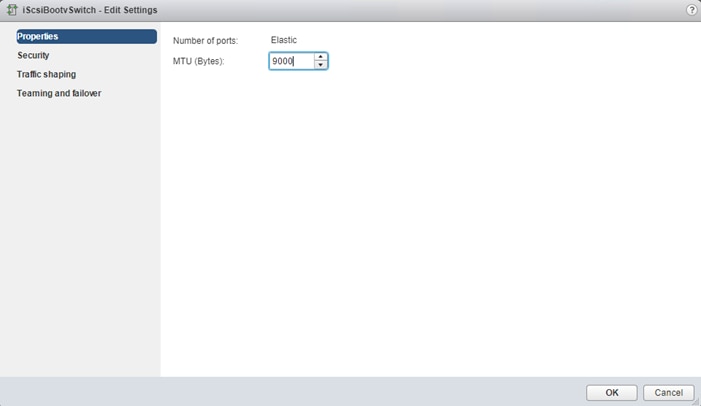

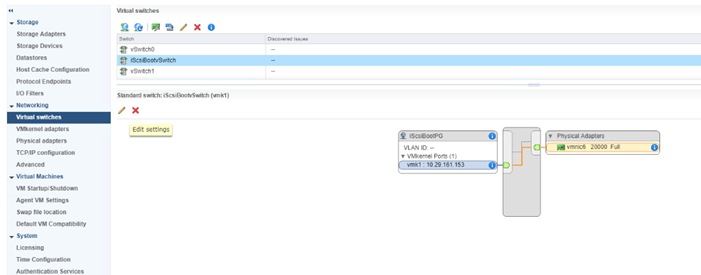

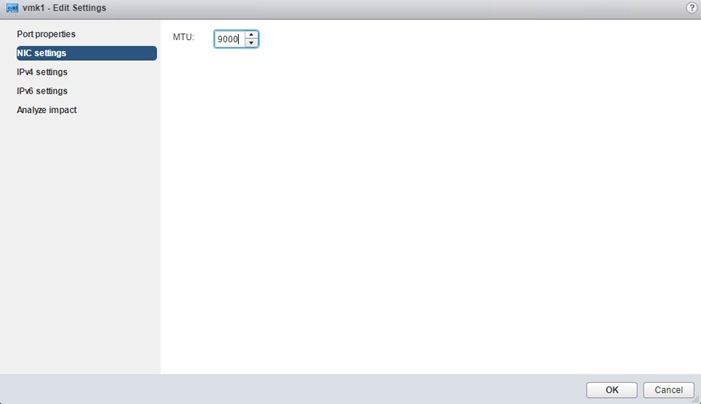

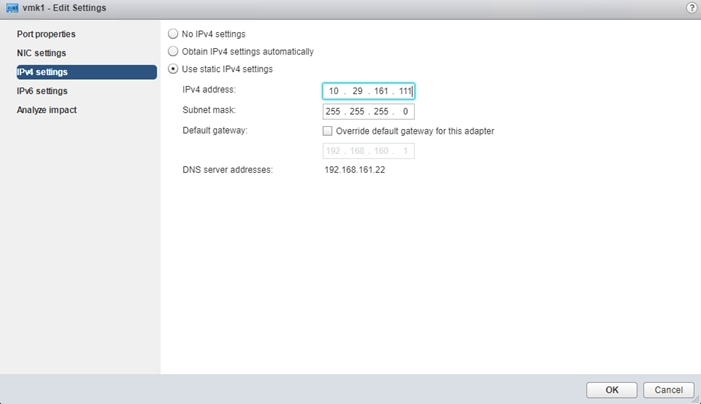

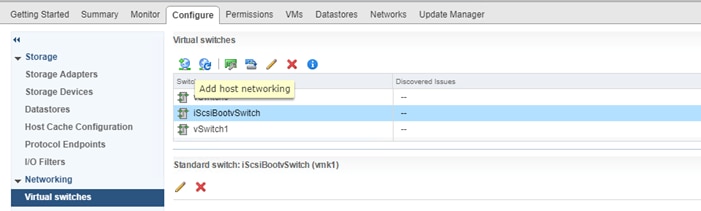

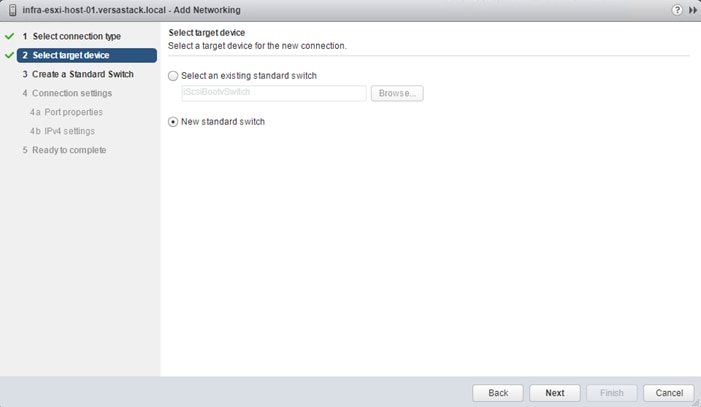

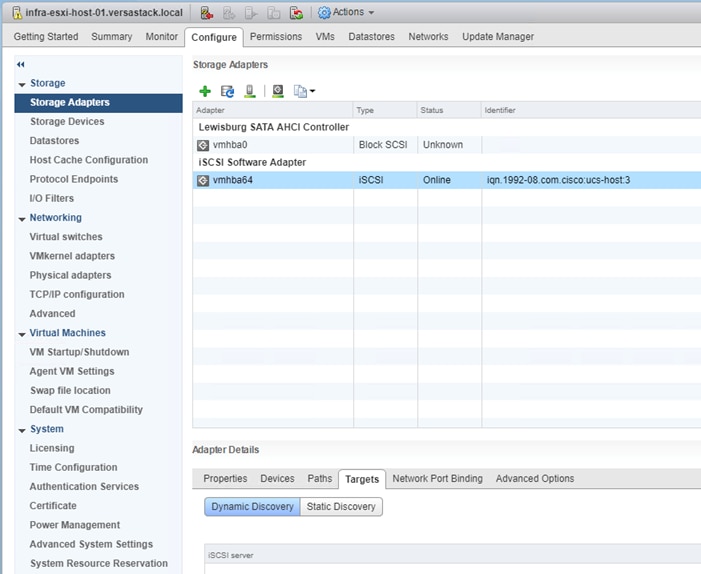

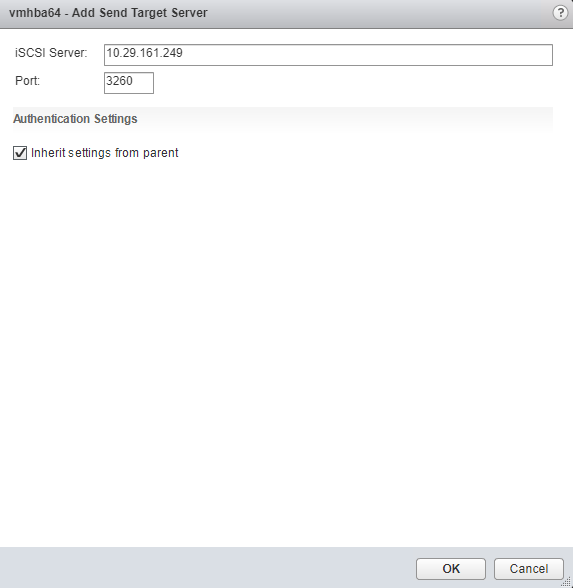

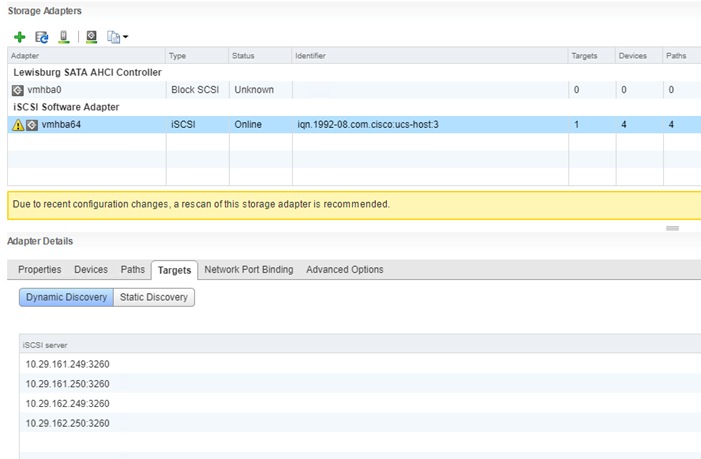

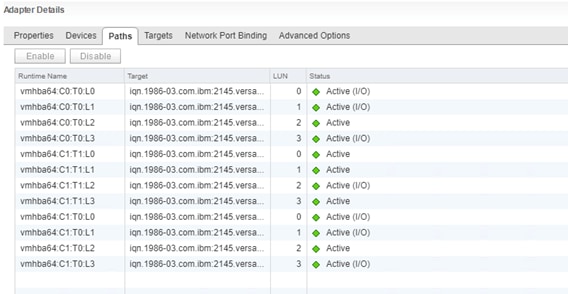

Configure iSCSI Adapters (iSCSI Deployment Only)

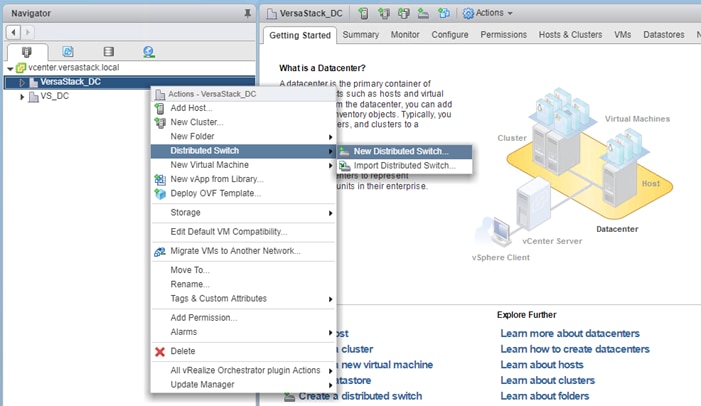

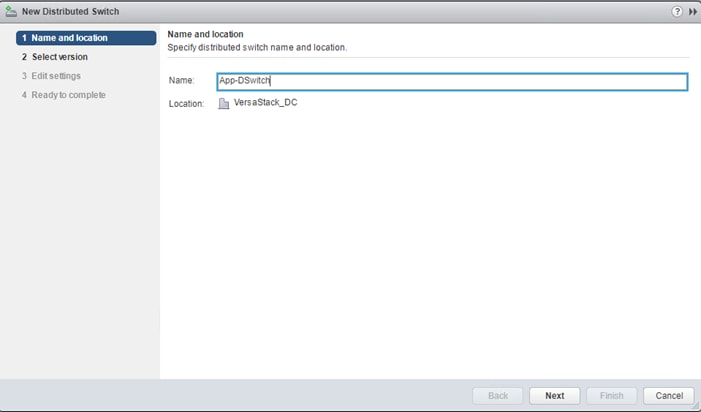

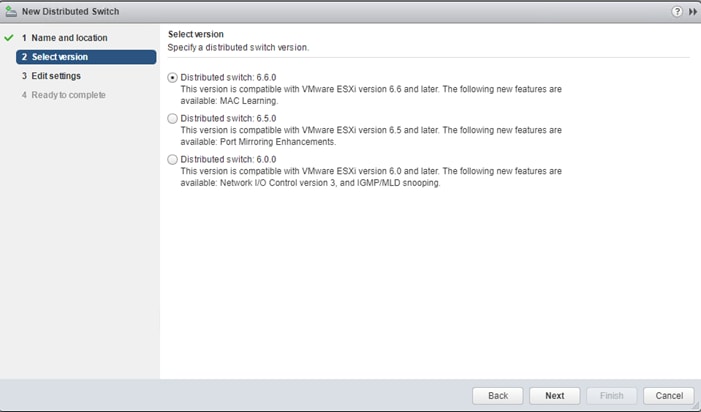

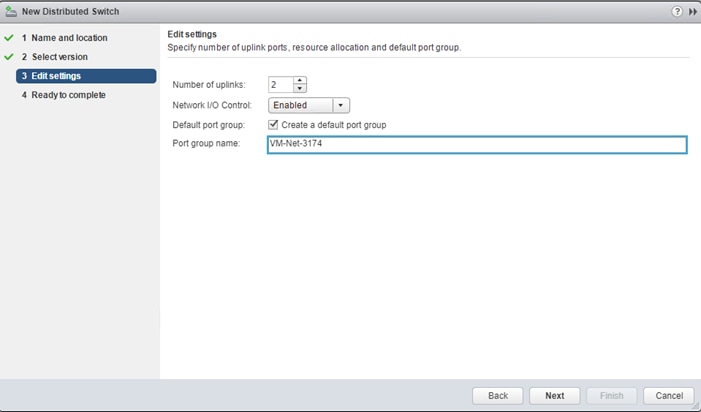

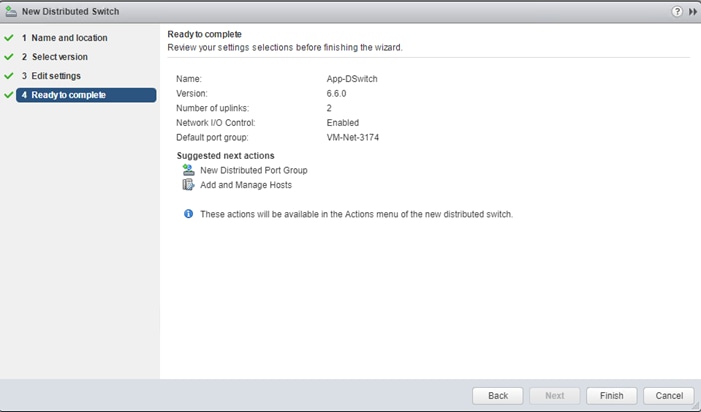

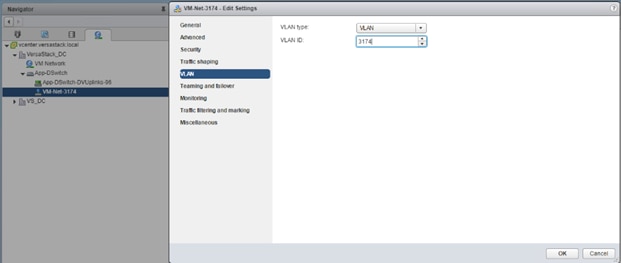

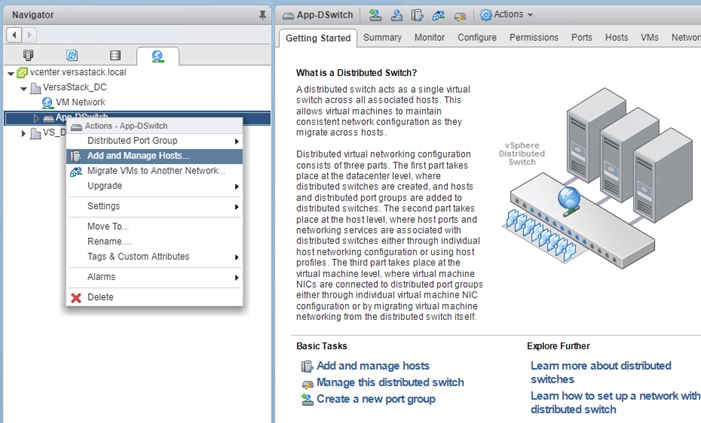

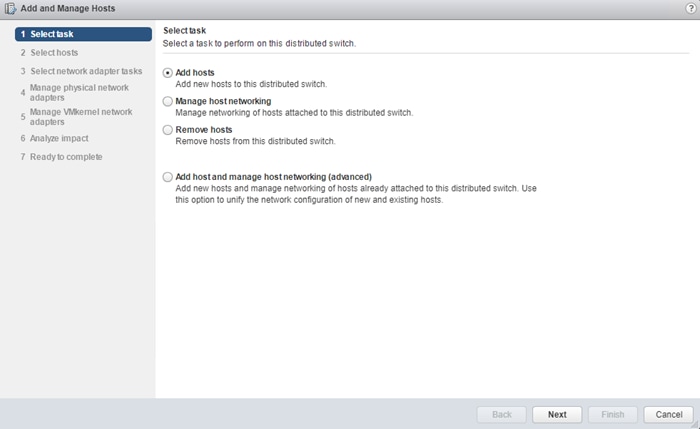

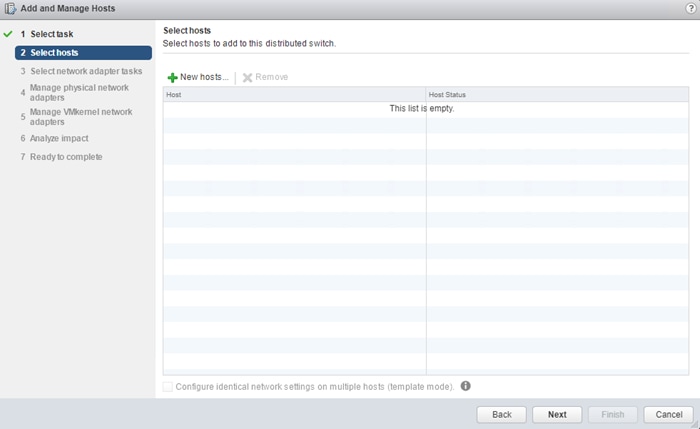

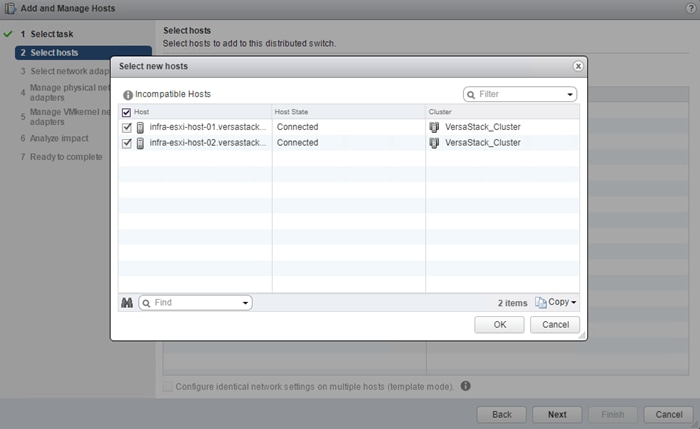

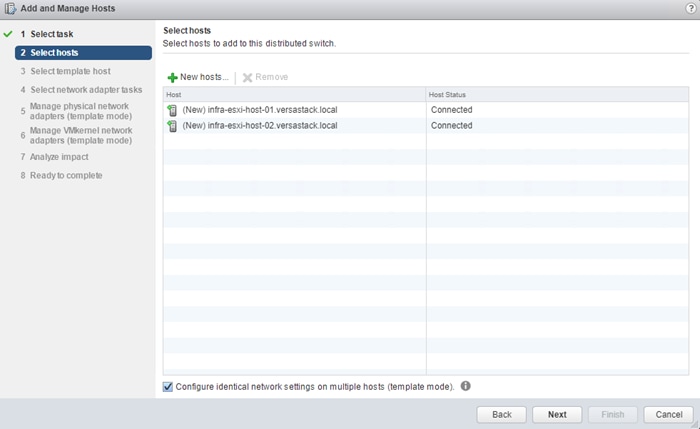

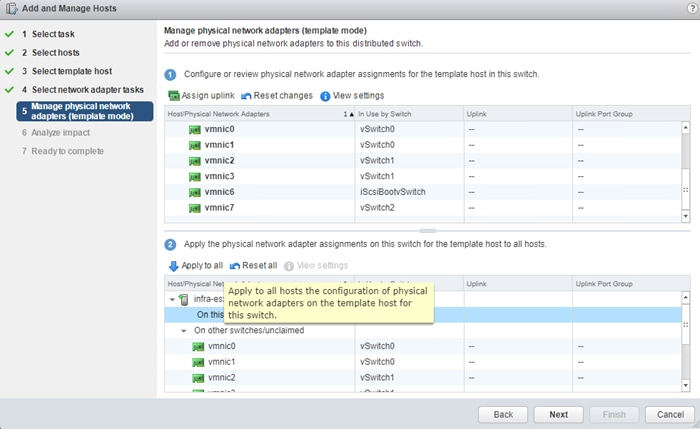

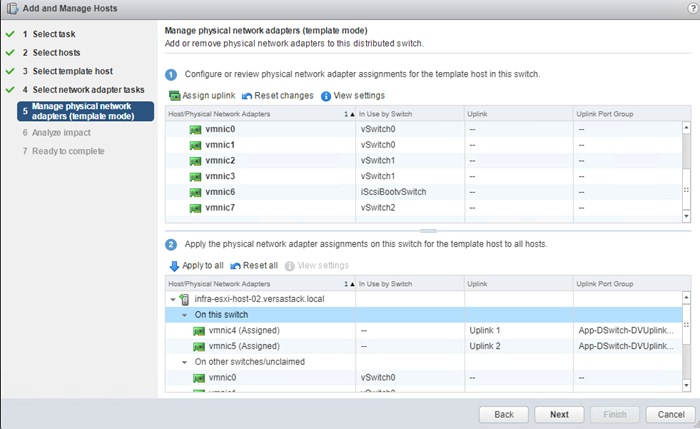

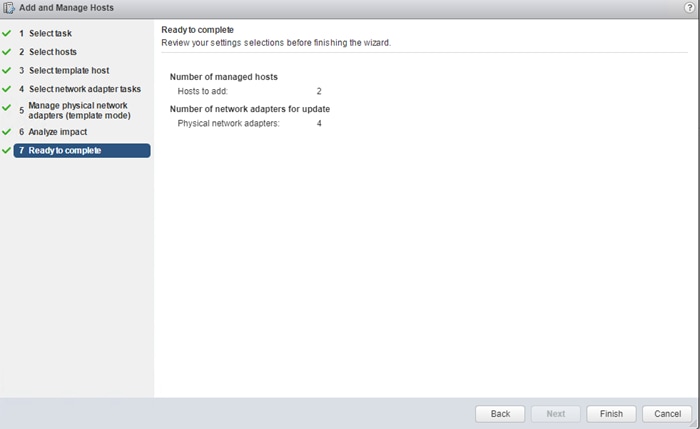

Create a VMware vDS for Application and Production Networks

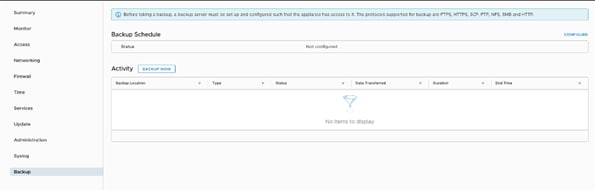

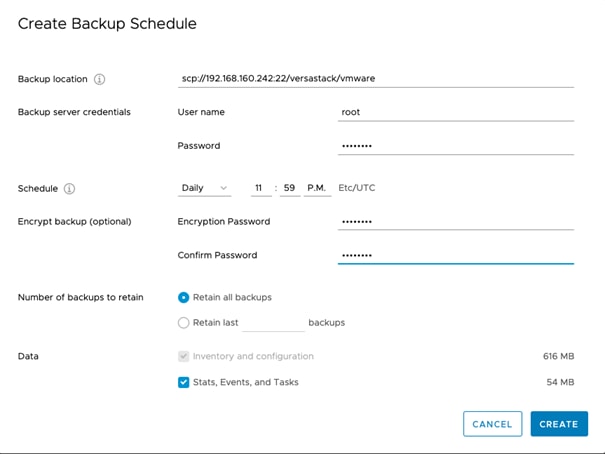

VersaStack Configuration Backups

Cisco Validated Designs (CVDs) deliver systems and solutions that are designed, tested, and documented to facilitate and improve customer deployments. These designs incorporate a wide range of technologies and products into a portfolio of solutions that have been developed to address the business needs of the customers and to guide them from design to deployment.

Customers looking to deploy applications using shared data center infrastructure face a number of challenges. A recurrent infrastructure challenge is to achieve the levels of IT agility and efficiency that can effectively meet the company business objectives. Addressing these challenges requires having an optimal solution with the following key characteristics:

· Availability: Help ensure applications and services availability at all times with no single point of failure

· Flexibility: Ability to support new services without requiring underlying infrastructure modifications

· Efficiency: Facilitate efficient operation of the infrastructure through re-usable policies

· Manageability: Ease of deployment and ongoing management to minimize operating costs

· Scalability: Ability to expand and grow with significant investment protection

· Compatibility: Minimize risk by ensuring compatibility of integrated components

· Extensibility: Extensible platform with support for various management applications and configuration tools

Cisco and IBM have partnered to deliver a series of VersaStack solutions that enable strategic data center platforms with the above characteristics. The VersaStack solution delivers an integrated architecture that incorporates compute, storage, and network design best practices, minimizing IT risk by validating the integrated architecture to ensure compatibility between its components. The solution also addresses IT pain points by providing documented design guidance, deployment guidance, and support that can be used in the planning, design, and implementation stages of a deployment.

The VersaStack solution described in this CVD delivers a Converged Infrastructure (CI) platform specifically designed for high-performance Virtual Server Infrastructure (VSI); it is a validated solution jointly developed by Cisco and IBM. In this deployment, IBM® FlashSystem 9100 combines the performance of flash and Non-Volatile Memory Express (NVMe) with the reliability and innovation of IBM FlashCore technology and the rich features of IBM Spectrum Virtualize. With the addition of Cisco UCS M5 servers with 2nd Generation Intel Xeon Scalable processors and Cisco UCS 6400 Series Fabric Interconnects, the solution provides superior compute performance and network throughput, with 10/25/40/100GbE Ethernet support using Cisco Nexus 9000 Series switches in the LAN and 32G Fibre Channel connectivity using the Cisco MDS 9000 portfolio of switches in the SAN.

The design showcases:

· Cisco UCS 6400 Series Fabric Interconnects (FI)

· Cisco UCS 5108 Blade Server chassis

· Cisco Unified Computing System (Cisco UCS) servers with 2nd Generation Intel Xeon Scalable processors

· Cisco Nexus 9336C-FX2 Switches running in NX-OS mode

· Cisco MDS 9132T Fabric Switches

· IBM FlashSystem 9100 NVMe-accelerated Storage

· VMware vSphere 6.7 Update 2

Introduction

The VersaStack solution is a pre-designed, integrated, and validated data center architecture that combines Cisco UCS servers, the Cisco Nexus family of switches, Cisco MDS fabric switches, and IBM Storage offerings into a single, flexible architecture. VersaStack is designed for high availability, with no single point of failure, while maintaining cost-effectiveness and flexibility in design to support a wide variety of workloads.

VersaStack designs can support different hypervisor options and bare-metal servers, and can be sized and optimized based on customer workload requirements. The VersaStack design discussed in this document has been validated for resiliency (under fair load) and fault tolerance during system upgrades, component failures, and partial loss of power scenarios.

This document discusses the design of the high-performance VersaStack solution based on flash and NVMe storage. The solution is a predesigned, best-practice data center architecture with VMware vSphere built on the Cisco Unified Computing System (Cisco UCS). The solution architecture presents a robust infrastructure viable for a wide range of application workloads implemented as a Virtual Server Infrastructure (VSI).

Audience

The intended audience of this document includes, but is not limited to, sales engineers, field consultants, professional services, IT managers, architects, partner engineering, and customers who want to take advantage of an infrastructure built to deliver IT efficiency and enable IT innovation.

Purpose of this Document

This document provides step-by-step configuration and implementation guidelines for setting up VersaStack. The following design elements distinguish this version of VersaStack from previous models:

· Support for UCS 6454 Fabric Interconnects

· Support for VIC 1400 series adapter cards on UCS M5 servers

· Support for Cisco UCS C480 M5 ML Servers (not validated in this document)

· Support for the Second Generation Intel® Xeon® Scalable processor (Cascade Lake) refresh and Intel® Optane™ Data Center persistent memory modules on UCS Intel-based M5 servers

· Improved memory RAS features on M5 servers

· IBM FlashSystem 9100 release 8.2.1.6

· Support for the Cisco UCS release 4.0(4c)

· Validation of 25GbE IP-based storage design with Nexus NX-OS switches supporting iSCSI based storage access

· Validation of VMware vSphere 6.7 U2

· 100 Gigabit per second Ethernet Connectivity

· 32 Gigabit per second Fibre Channel Connectivity

The design that will be implemented is discussed in the VersaStack with VMware vSphere 6.7 Design Guide found at: https://www.cisco.com/c/en/us/td/docs/unified_computing/ucs/UCS_CVDs/versastack_vmw67_ibmfs9100_design.html

For more information on the complete portfolio of VersaStack solutions, please refer to the VersaStack guides:

Architecture

This VersaStack design aligns with the converged infrastructure configurations and best practices identified in previous VersaStack releases. The solution focuses on the integration of IBM FlashSystem 9100 into the VersaStack architecture with Cisco UCS 4th Generation fabric and support for VMware vSphere 6.7 U2.

The system includes hardware and software compatibility support between all components and aligns to the configuration best practices for each of these components. All core hardware components and software releases are listed and supported on the following lists:

http://www.cisco.com/en/US/products/ps10477/prod_technical_reference_list.html

and IBM Interoperability Matrix:

http://www-03.ibm.com/systems/support/storage/ssic/interoperability.wss

The system supports high availability at the network, compute, and storage layers such that no single point of failure exists in the design. The system utilizes 10/25/40/100 Gbps Ethernet jumbo-frame based connectivity combined with port aggregation technologies such as virtual port channels (vPC) for non-blocking LAN traffic forwarding. A dual 32Gbps SAN environment provides redundant storage access from compute devices to the storage controllers.

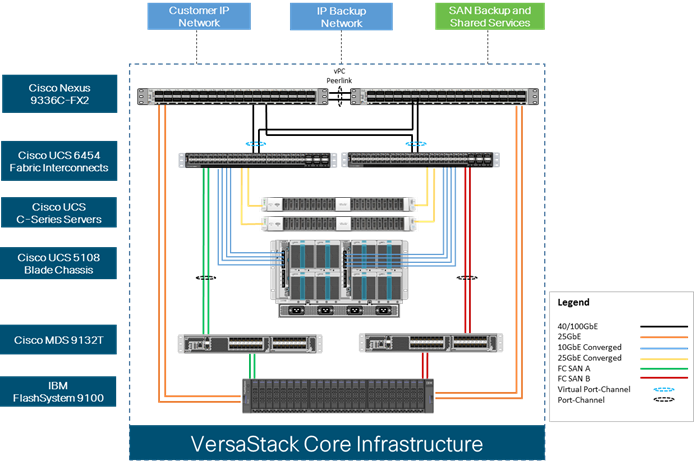

Physical Topology

The VersaStack infrastructure satisfies the high-availability design requirements and is physically redundant across the network, compute and storage stacks. Figure 1 provides a high-level topology of the system connectivity.

To provide compute-to-storage connectivity, this design guide highlights two different storage connectivity options:

· Option 1: iSCSI based storage access through Cisco Nexus Fabric

· Option 2: FC based storage access through Cisco MDS Fabric

This VersaStack design utilizes the Cisco UCS platform with Cisco UCS B200 M5 half-width blades and Cisco UCS C220 M5 servers, connected and managed through Cisco UCS 6454 Fabric Interconnects and the integrated Cisco UCS Manager. These high-performance servers are configured as stateless compute nodes, where the ESXi 6.7 U2 hypervisor is loaded using SAN (iSCSI and FC) boot. The boot disks that store the ESXi hypervisor image and configuration, along with the block-based datastores that host application Virtual Machines (VMs), are provisioned on the IBM FS9100 storage array.

This design has the following physical connectivity between the components of VersaStack:

· 4 X 10 Gb Ethernet connections port-channeled between the Cisco UCS 5108 Blade Chassis and the Cisco UCS Fabric Interconnects

· 25 Gb Ethernet connections port-channeled between the Cisco UCS C-Series rackmounts and the Cisco UCS Fabric Interconnects

· 100 Gb Ethernet connections port-channeled between the Cisco UCS Fabric Interconnect and Cisco Nexus 9000s

· 32 Gb Fibre Channel connections port-channeled between the Cisco UCS Fabric Interconnect and Cisco MDS 9132T

· 16 Gb Fibre Channel connections between the Cisco MDS 9132T and IBM FS9100 storage array for fibre channel block storage access

· 25 Gb Ethernet connections between the Cisco Nexus 9000s and IBM FS9100 storage array for iSCSI block storage access

Any supported connectivity to existing customer IP and SAN Networks from the VersaStack core infrastructure is allowed.

Figure 1 VersaStack Physical Topology

This document guides customers through the low-level steps for deploying the base architecture. These procedures explain everything from physical cabling to network, compute, and storage device configurations.

For detailed information about the VersaStack design, see:

Software Revisions

Table 1 lists the hardware and software versions used for the solution validation.

It is important to note that Cisco, IBM, and VMware have interoperability matrices that should be referenced to determine support for any specific implementation of VersaStack. See the following links for more information:

· IBM System Storage Interoperation Center

· Cisco UCS Hardware and Software Interoperability Tool

Table 1 Hardware and Software Revisions

| Layer | Device | Image | Comments |
| --- | --- | --- | --- |
| Compute | Cisco UCS Fabric Interconnects 6400 Series, Cisco UCS B-200 M5, Cisco UCS C-220 M5 | 4.0(4c) | Includes the Cisco UCS-IOM 2208XP, Cisco UCS Manager, Cisco UCS VIC 1440 and Cisco UCS VIC 1457 |
| Compute | Cisco nenic Driver | 1.0.29.0 | Ethernet driver for Cisco VIC |
| Compute | Cisco fnic Driver | 4.0.0.40 | FCoE driver for Cisco VIC |
| Network | Cisco Nexus Switches | 7.0(3)I7(6) | NXOS |
| Network | Cisco MDS 9132T | 8.4(1) | FC switch firmware version |
| Storage | IBM FlashSystem 9100 | 8.2.1.6 | Software version |
| Software | VMware vSphere ESXi | 6.7 update 2 | Software version |
| Software | VMware vCenter | 6.7 update 2 | Software version |

Configuration Guidelines

This document provides details for configuring a fully redundant, highly available VersaStack configuration. Therefore, each step indicates which component is being configured, using identifiers such as 01 and 02 or A and B. For example, the Cisco UCS fabric interconnects are identified as FI-A or FI-B. This document is intended to enable customers and partners to fully configure the customer environment. During this process, various steps may require customer-specific naming conventions, IP addresses, and VLAN schemes, as well as appropriate MAC addresses.

This document details network (Nexus and MDS), compute (Cisco UCS), virtualization (VMware) and related IBM FS9100 storage configurations (host to storage system connectivity).

Table 2 lists the VLANs, VSANs, and subnets used to set up the VersaStack infrastructure and provide connectivity between the core elements of the design.

Table 2 VersaStack Infrastructure Configuration

| VLAN Name |

VLAN |

Subnet |

Usage |

| IB-MGMT |

11 |

192.168.160.0/22 |

Management VLAN to access and manage the servers |

| iSCSI-A |

3161 |

10.29.161.0/24 |

iSCSI-A path for booting both B Series and C Series servers and datastore access |

| iSCSI-B |

3162 |

10.29.162.0/24 |

iSCSI-B path for booting both B Series and C Series servers and datastore access |

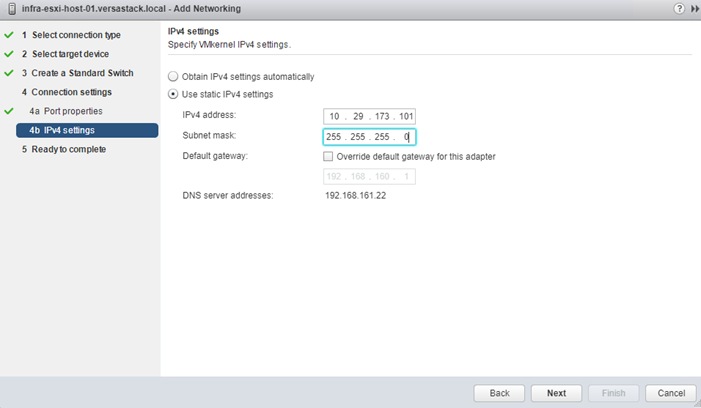

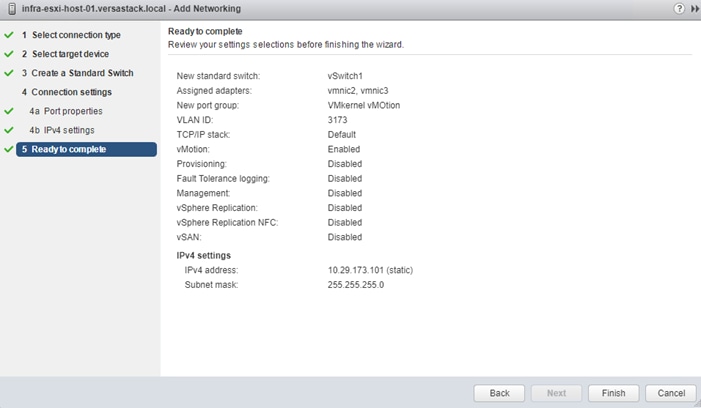

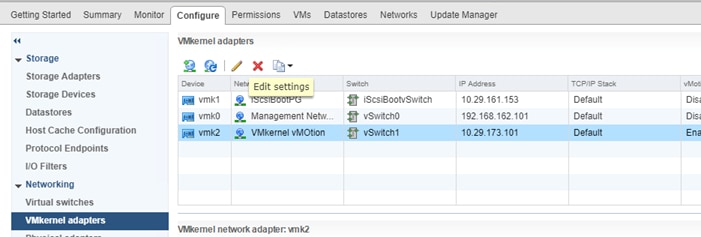

| vMotion |

3173 |

10.29.173.0/24 |

VMware vMotion traffic |

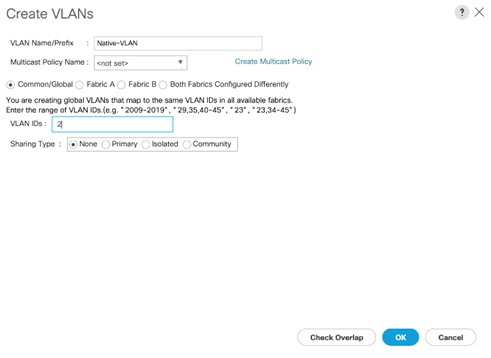

| Native-2 |

2 |

N/A |

VLAN 2 used as Native VLAN instead of default VLAN (1) |

| VM Network |

3174 |

10.29.174.0/24 |

VLAN to carry data traffic for both VM and bare-metal Servers |

| VSAN-A |

101 |

N/A |

Fabric A VSAN for FC Storage access |

| VSAN-B |

102 |

N/A |

Fabric B VSAN for FC Storage access |
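Because various steps may require customer-specific VLAN IDs and subnets, it can help to sanity-check an addressing plan before configuring any device. The following is a minimal sketch, not part of the validated procedure: it uses the VLAN IDs and subnets from Table 2, while the dictionary layout and the function names `check_vlan_plan` and `usable_hosts` are illustrative assumptions.

```python
import ipaddress
from itertools import combinations

# VLAN IDs and subnets copied from Table 2.
# The dictionary layout itself is an illustrative assumption.
VLAN_PLAN = {
    "IB-MGMT": (11, "192.168.160.0/22"),
    "iSCSI-A": (3161, "10.29.161.0/24"),
    "iSCSI-B": (3162, "10.29.162.0/24"),
    "vMotion": (3173, "10.29.173.0/24"),
    "VM Network": (3174, "10.29.174.0/24"),
    "Native-2": (2, None),  # native VLAN, no subnet assigned
}


def check_vlan_plan(plan):
    """Return a list of problems: out-of-range IDs, duplicate IDs, overlapping subnets."""
    problems = []
    ids = [vlan_id for vlan_id, _ in plan.values()]
    for name, (vlan_id, _) in plan.items():
        if not 1 <= vlan_id <= 4094:
            problems.append(f"{name}: VLAN ID {vlan_id} outside 1-4094")
    if len(ids) != len(set(ids)):
        problems.append("duplicate VLAN IDs")
    # ip_network() raises ValueError if a subnet has host bits set.
    nets = {
        name: ipaddress.ip_network(cidr)
        for name, (_, cidr) in plan.items()
        if cidr is not None
    }
    for (a, net_a), (b, net_b) in combinations(nets.items(), 2):
        if net_a.overlaps(net_b):
            problems.append(f"{a} overlaps {b}")
    return problems


def usable_hosts(cidr):
    """Number of host addresses, excluding the network and broadcast addresses."""
    return ipaddress.ip_network(cidr).num_addresses - 2


if __name__ == "__main__":
    print(check_vlan_plan(VLAN_PLAN))        # [] when the plan is consistent
    print(usable_hosts("192.168.160.0/22"))  # 1022
```

When substituting site-specific values, an empty list from `check_vlan_plan` indicates that the IDs are unique and in range and that no two subnets overlap.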

Physical Infrastructure

The information in this section is provided as a reference for cabling the equipment in a VersaStack environment. To simplify the documentation, the architecture shown in Figure 2 is broken down into network, compute, and storage related physical connectivity details.

This document assumes that the out-of-band management ports are plugged into an existing management infrastructure at the deployment site. These interfaces will be used in various configuration steps.

Customers can choose different interfaces and ports, but deviating from the exact connectivity shown in the figures below requires corresponding changes to the deployment procedures, because specific port information is used in various configuration steps.

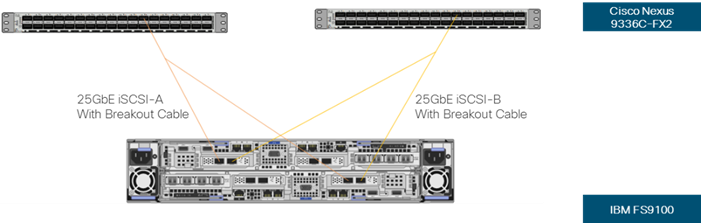

The Nexus 9336C-FX2 switches used in this design support 10/25/40/100 Gbps on all ports. The switch supports breakout interfaces: each 100Gbps port can be split into 4 X 25Gbps interfaces. A QSFP breakout cable connects the 25Gbps iSCSI Ethernet ports on the FS9100 storage array to a 100Gbps QSFP port on the switch. With this connectivity, IBM SFP transceivers on the FS9100 are not required.
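The breakout arithmetic and interface naming can be illustrated with a short sketch. It assumes the slot/port/lane naming pattern that this document uses for breakout ports (for example, Eth1/11/1 and Eth1/11/2); the function names `breakout_interfaces` and `lane_speed_gbps` are hypothetical helpers, not switch commands.

```python
def breakout_interfaces(parent, lanes=4):
    """Return the sub-interface names for a parent port split into `lanes` lanes."""
    return [f"{parent}/{lane}" for lane in range(1, lanes + 1)]


def lane_speed_gbps(port_speed_gbps=100, lanes=4):
    """Nominal speed of each lane when a port is split evenly."""
    return port_speed_gbps / lanes


if __name__ == "__main__":
    print(breakout_interfaces("Eth1/11"))
    # ['Eth1/11/1', 'Eth1/11/2', 'Eth1/11/3', 'Eth1/11/4']
    print(lane_speed_gbps())  # 25.0
```

In this design only two of the four lanes per switch are cabled to the FS9100 node canisters, which leaves the remaining lanes available for additional connections.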

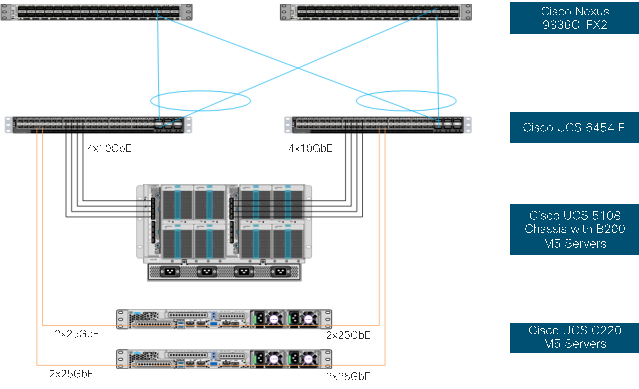

Cisco UCS Connectivity to Nexus Switches

For physical connectivity details of Cisco UCS to the Cisco Nexus switches, refer to Figure 2.

Figure 2 Cisco UCS Connectivity to the Nexus Switches

Table 3 Cisco UCS Connectivity to Nexus Switches

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Cisco UCS Fabric Interconnect A | Eth1/17 | 10GbE | Cisco UCS Chassis FEX A | IOM 1/1 |
| Cisco UCS Fabric Interconnect A | Eth1/18 | 10GbE | Cisco UCS Chassis FEX A | IOM 1/2 |
| Cisco UCS Fabric Interconnect A | Eth1/19 | 10GbE | Cisco UCS Chassis FEX A | IOM 1/3 |
| Cisco UCS Fabric Interconnect A | Eth1/20 | 10GbE | Cisco UCS Chassis FEX A | IOM 1/4 |
| Cisco UCS Fabric Interconnect A | Eth1/53 | 100GbE | Cisco Nexus 9336C-FX2 A | Eth1/31 |
| Cisco UCS Fabric Interconnect A | Eth1/54 | 100GbE | Cisco Nexus 9336C-FX2 B | Eth1/31 |
| Cisco UCS Fabric Interconnect B | Eth1/17 | 10GbE | Cisco UCS Chassis FEX B | IOM 1/1 |
| Cisco UCS Fabric Interconnect B | Eth1/18 | 10GbE | Cisco UCS Chassis FEX B | IOM 1/2 |
| Cisco UCS Fabric Interconnect B | Eth1/19 | 10GbE | Cisco UCS Chassis FEX B | IOM 1/3 |
| Cisco UCS Fabric Interconnect B | Eth1/20 | 10GbE | Cisco UCS Chassis FEX B | IOM 1/4 |
| Cisco UCS Fabric Interconnect B | Eth1/53 | 100GbE | Cisco Nexus 9336C-FX2 A | Eth1/32 |
| Cisco UCS Fabric Interconnect B | Eth1/54 | 100GbE | Cisco Nexus 9336C-FX2 B | Eth1/32 |
| Cisco UCS C220 M5 Server 1 | VIC Port 1, Port 3 | 25GbE | Cisco UCS Fabric Interconnect A | Eth1/21, Eth1/22 |
| Cisco UCS C220 M5 Server 1 | VIC Port 2, Port 4 | 25GbE | Cisco UCS Fabric Interconnect B | Eth1/21, Eth1/22 |
| Cisco UCS C220 M5 Server 2 | VIC Port 1, Port 3 | 25GbE | Cisco UCS Fabric Interconnect A | Eth1/23, Eth1/24 |
| Cisco UCS C220 M5 Server 2 | VIC Port 2, Port 4 | 25GbE | Cisco UCS Fabric Interconnect B | Eth1/23, Eth1/24 |
| Cisco Nexus 9336C-FX2 A | Eth1/33 | 100GbE | Cisco Nexus 9336C-FX2 B | Eth1/33 |
| Cisco Nexus 9336C-FX2 A | Eth1/34 | 100GbE | Cisco Nexus 9336C-FX2 B | Eth1/34 |
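Since later configuration steps depend on specific ports, a cabling plan can be checked for redundancy before any cables are run. The sketch below is one possible check under stated assumptions: it encodes only the 100GbE uplink rows of Table 3, and the short device names (`FI-A`, `N9K-A`, and so on) and the function `check_uplinks` are shorthand introduced here for illustration.

```python
from collections import defaultdict

# (local device, local port, remote device, remote port)
# Taken from the 100GbE uplink rows of Table 3; device names are abbreviated.
UPLINKS = [
    ("FI-A", "Eth1/53", "N9K-A", "Eth1/31"),
    ("FI-A", "Eth1/54", "N9K-B", "Eth1/31"),
    ("FI-B", "Eth1/53", "N9K-A", "Eth1/32"),
    ("FI-B", "Eth1/54", "N9K-B", "Eth1/32"),
]


def check_uplinks(links, switches=("N9K-A", "N9K-B")):
    """Return problems: ports used twice, or a device not cabled to every switch."""
    problems = []
    seen = set()
    peers = defaultdict(set)
    for local, local_port, remote, remote_port in links:
        for end in ((local, local_port), (remote, remote_port)):
            if end in seen:
                problems.append(f"port used twice: {end[0]} {end[1]}")
            seen.add(end)
        peers[local].add(remote)
    for device, remotes in peers.items():
        missing = sorted(set(switches) - remotes)
        if missing:
            problems.append(f"{device} has no uplink to {missing}")
    return problems


if __name__ == "__main__":
    print(check_uplinks(UPLINKS))  # [] when each FI reaches both Nexus switches
```

An empty result confirms that each fabric interconnect is cabled to both Nexus switches and that no port appears in more than one link.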

IBM FS9100 Connectivity to Nexus Switches

For physical connectivity details of IBM FS9100 node canisters to the Cisco Nexus switches, refer to Figure 3 and Table 4. This deployment shows connectivity for a pair of IBM FS9100 node canisters. Additional nodes can be connected to open ports on the Nexus switches as needed.

Figure 3 IBM FS9100 Connectivity to Nexus 9k Switches

Table 4 IBM FS9100 Connectivity to the Nexus Switches

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| IBM FS9100 node 1 | Port 5 | 25GbE | Cisco Nexus 9336C-FX2 A | Eth1/11/1* |
| IBM FS9100 node 1 | Port 6 | 25GbE | Cisco Nexus 9336C-FX2 B | Eth1/11/1* |
| IBM FS9100 node 2 | Port 5 | 25GbE | Cisco Nexus 9336C-FX2 A | Eth1/11/2* |
| IBM FS9100 node 2 | Port 6 | 25GbE | Cisco Nexus 9336C-FX2 B | Eth1/11/2* |

* One 100G QSFP port on the Nexus 9336C-FX2 is split into 4 X 25Gbps SFP breakout interfaces connected to the IBM FS9100. The Cisco QSFP100G-4SFP25G breakout cable is used for this connectivity.

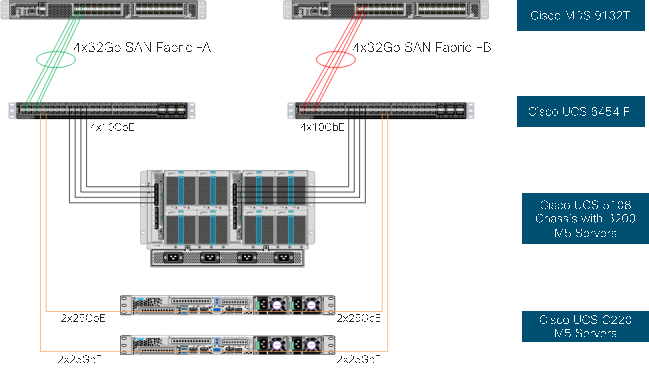

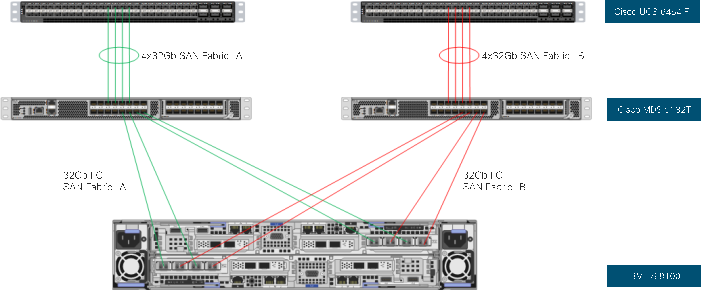

Cisco UCS connectivity to SAN Fabric

For physical connectivity details of Cisco UCS to an MDS 9132T based redundant SAN fabric, refer to Figure 4.

Figure 4 Cisco UCS Connectivity to Cisco MDS Switches

Table 5 Cisco UCS Connectivity to Cisco MDS Switches

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| Cisco UCS Fabric Interconnect A | FC1/1 | 32Gbps | Cisco MDS 9132T A | FC1/1 |
| Cisco UCS Fabric Interconnect A | FC1/2 | 32Gbps | Cisco MDS 9132T A | FC1/2 |
| Cisco UCS Fabric Interconnect A | FC1/3 | 32Gbps | Cisco MDS 9132T A | FC1/3 |
| Cisco UCS Fabric Interconnect A | FC1/4 | 32Gbps | Cisco MDS 9132T A | FC1/4 |
| Cisco UCS Fabric Interconnect B | FC1/1 | 32Gbps | Cisco MDS 9132T B | FC1/1 |
| Cisco UCS Fabric Interconnect B | FC1/2 | 32Gbps | Cisco MDS 9132T B | FC1/2 |
| Cisco UCS Fabric Interconnect B | FC1/3 | 32Gbps | Cisco MDS 9132T B | FC1/3 |
| Cisco UCS Fabric Interconnect B | FC1/4 | 32Gbps | Cisco MDS 9132T B | FC1/4 |

Figure 5 and Table 6 show the FC connectivity for the IBM FS9100 storage array. Additional nodes can be connected and configured by following the same design guidelines.

Figure 5 IBM FS9100 and Storage System FC Connectivity

Table 6 IBM FS9100 Storage System FC Connectivity

| Local Device | Local Port | Connection | Remote Device | Remote Port |
| --- | --- | --- | --- | --- |
| IBM FS9100 Node Canister 1 | Port 1 | 16Gbps | Cisco MDS 9132T A | FC1/5 |
| IBM FS9100 Node Canister 1 | Port 2 | 16Gbps | Cisco MDS 9132T B | FC1/5 |
| IBM FS9100 Node Canister 1 | Port 3 | 16Gbps | Cisco MDS 9132T A | FC1/6 |
| IBM FS9100 Node Canister 1 | Port 4 | 16Gbps | Cisco MDS 9132T B | FC1/6 |
| IBM FS9100 Node Canister 2 | Port 1 | 16Gbps | Cisco MDS 9132T A | FC1/7 |
| IBM FS9100 Node Canister 2 | Port 2 | 16Gbps | Cisco MDS 9132T B | FC1/7 |
| IBM FS9100 Node Canister 2 | Port 3 | 16Gbps | Cisco MDS 9132T A | FC1/8 |
| IBM FS9100 Node Canister 2 | Port 4 | 16Gbps | Cisco MDS 9132T B | FC1/8 |

The procedures in this section describe how to configure the Cisco Nexus switches for use in a base VersaStack environment. This procedure assumes the use of Nexus 9336C-FX2 switches running 7.0(3)I7(6) code. Configuration on a differing model of Nexus 9000 series switch should be comparable but may differ slightly with model and changes in NX-OS release. The Cisco Nexus 9336C-FX2 switch and NX-OS 7.0(3)I7(6) release were used in validation of this VersaStack solution, so steps will reflect this model and release.

Connectivity between the Nexus switches and IBM FS9100 for iSCSI access depends on the Nexus 9000 switch model used within the architecture. If any supported Nexus switch with 25Gbps capable SFP ports is used, breakout cable is not required and ports from the switch to IBM FS9100 can be connected directly using the SFP transceivers on both sides.

With Cisco Nexus 9000 release 7.0(3)I7(6), autonegotiation (40G/100G) is not supported on ports 1-6 and 33-36 on the Cisco Nexus 9336C-FX2 switch. If these ports are used for connectivity, port speed and duplex should be hard set at both ends of the connection.

Physical Connectivity

Physical cabling should be completed by following the diagram and table references in the previous sections.

Cisco Nexus 9000 Initial Configuration Setup

This section provides the steps for the initial Cisco Nexus 9336C-FX2 switch setup. In this case, we are connected using a Cisco Terminal Server that is attached to the console port on the switch.

Cisco Nexus A

To set up the initial configuration for the first Cisco Nexus switch <nexus-A-hostname>, follow these steps:

On initial boot and connection to the serial or console port of the switch, the NX-OS setup should automatically start and attempt to enter Power on Auto Provisioning.

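1. Configure the switch:

Abort Power On Auto Provisioning [yes - continue with normal setup, skip - bypass password and basic configuration, no - continue with Power On Auto Provisioning] (yes/skip/no)[no]: yes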

Disabling POAP.......Disabling POAP

poap: Rolling back, please wait... (This may take 5-15 minutes)

---- System Admin Account Setup ----

Do you want to enforce secure password standard (yes/no) [y]: Enter

Enter the password for "admin": <password>

Confirm the password for "admin": <password>

Would you like to enter the basic configuration dialog (yes/no): yes

Create another login account (yes/no) [n]: Enter

Configure read-only SNMP community string (yes/no) [n]: Enter

Configure read-write SNMP community string (yes/no) [n]: Enter

Enter the switch name: <nexus-A-hostname>

Continue with Out-of-band (mgmt0) management configuration? (yes/no) [y]: Enter

Mgmt0 IPv4 address: <nexus-A-mgmt0-ip>

Mgmt0 IPv4 netmask: <nexus-A-mgmt0-netmask>

Configure the default gateway? (yes/no) [y]: Enter

IPv4 address of the default gateway: <nexus-A-mgmt0-gw>

Configure advanced IP options? (yes/no) [n]: Enter

Enable the telnet service? (yes/no) [n]: Enter

Enable the ssh service? (yes/no) [y]: Enter

Type of ssh key you would like to generate (dsa/rsa) [rsa]: Enter

Number of rsa key bits <1024-2048> [1024]: Enter

Configure the ntp server? (yes/no) [n]: y

NTP server IPv4 address: <global-ntp-server-ip>

Configure default interface layer (L3/L2) [L2]: Enter

Configure default switchport interface state (shut/noshut) [noshut]: shut

Configure CoPP system profile (strict/moderate/lenient/dense/skip) [strict]: Enter

Would you like to edit the configuration? (yes/no) [n]: Enter

2. Review the configuration summary before enabling the configuration.

Cisco Nexus B

To set up the initial configuration for the second Cisco Nexus switch <nexus-B-hostname>, follow these steps:

On initial boot and connection to the serial or console port of the switch, the NX-OS setup should automatically start and attempt to enter Power on Auto Provisioning.

1. Configure the switch:

Abort Power On Auto Provisioning [yes - continue with normal setup, skip - bypass password and basic configuration, no - continue with Power On Auto Provisioning] (yes/skip/no)[no]: yes

Disabling POAP.......Disabling POAP

poap: Rolling back, please wait... (This may take 5-15 minutes)

---- System Admin Account Setup ----

Do you want to enforce secure password standard (yes/no) [y]: Enter

Enter the password for "admin": <password>

Confirm the password for "admin": <password>

Would you like to enter the basic configuration dialog (yes/no): yes

Create another login account (yes/no) [n]: Enter

Configure read-only SNMP community string (yes/no) [n]: Enter

Configure read-write SNMP community string (yes/no) [n]: Enter

Enter the switch name: <nexus-B-hostname>

Continue with Out-of-band (mgmt0) management configuration? (yes/no) [y]: Enter

Mgmt0 IPv4 address: <nexus-B-mgmt0-ip>

Mgmt0 IPv4 netmask: <nexus-B-mgmt0-netmask>

Configure the default gateway? (yes/no) [y]: Enter

IPv4 address of the default gateway: <nexus-B-mgmt0-gw>

Configure advanced IP options? (yes/no) [n]: Enter

Enable the telnet service? (yes/no) [n]: Enter

Enable the ssh service? (yes/no) [y]: Enter

Type of ssh key you would like to generate (dsa/rsa) [rsa]: Enter

Number of rsa key bits <1024-2048> [1024]: Enter

Configure the ntp server? (yes/no) [n]: y

NTP server IPv4 address: <global-ntp-server-ip>

Configure default interface layer (L3/L2) [L2]: Enter

Configure default switchport interface state (shut/noshut) [noshut]: shut

Configure CoPP system profile (strict/moderate/lenient/dense/skip) [strict]: Enter

Would you like to edit the configuration? (yes/no) [n]: Enter

2. Review the configuration summary before enabling the configuration.

Enable Appropriate Cisco Nexus 9000 Features and Settings

Enable Licenses

Cisco Nexus 9000 A and Cisco Nexus 9000 B

To enable the required features on the Cisco Nexus switches, follow these steps:

1. Log in as admin.

2. Run the following commands:

config terminal

feature udld

feature lacp

feature vpc
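
As an optional check (a sketch, not part of the validated procedure), the state of each feature can be confirmed on both switches after the commands above. The include filters are only a convenience, and the exact output format may vary by NX-OS release.

```
! Each line should report the corresponding feature as enabled
show feature | include udld
show feature | include lacp
show feature | include vpc
```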

Set Global Configurations

Cisco Nexus 9000 A and Cisco Nexus 9000 B

To set global configurations, follow these steps on both switches:

spanning-tree port type network default

spanning-tree port type edge bpduguard default

spanning-tree port type edge bpdufilter default

port-channel load-balance src-dst l4port

ip route 0.0.0.0/0 <ib-mgmt-vlan-gateway>

copy run start
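
The global settings above can be spot-checked with the following commands. This is offered as a sketch; the wording of the output depends on the NX-OS release, so treat the expectations in the comments as approximate.

```
! Should list the default port type as network, with BPDU Guard and BPDU Filter enabled by default on edge ports
show spanning-tree summary
! Should reflect the src-dst l4port hashing selected above
show port-channel load-balance
```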

Set Up NTP (Optional)

The following procedure can be used to optionally enable the NTP service on the Nexus switches. The procedure includes the setup of NTP distribution on both the mgmt0 port and the in-band management VLAN. The interface-vlan feature and ntp commands are used to set this up. This procedure also assumes that the default VRF is used to route the in-band management VLAN.

Cisco Nexus 9000 A and Cisco Nexus 9000 B

1. Run the following commands

feature interface-vlan

ntp server <global-ntp-server-ip> use-vrf management

ntp master 3

Add NTP Distribution Interface

Cisco Nexus A

1. From the global configuration mode, run the following commands:

interface Vlan<IB-Mgmt VLAN id>

ip address <switch-a-ntp-ip>/<ib-mgmt-vlan-netmask-length>

no shutdown

exit

ntp peer <switch-b-ntp-ip> use-vrf default

Cisco Nexus B

1. From the global configuration mode, run the following commands:

interface Vlan<IB-Mgmt VLAN id>

ip address <switch-b-ntp-ip>/<ib-mgmt-vlan-netmask-length>

no shutdown

exit

ntp peer <switch-a-ntp-ip> use-vrf default
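
To confirm the NTP configuration, the following commands can be run on each switch. This is a sketch under one assumption worth stating: the peer association over the in-band management VLAN will most likely stay unreachable until the VLANs and the vPC peer link in the following sections are configured, so only the upstream server in the management VRF may show as reachable at this point.

```
! Lists the configured NTP servers and peers
show ntp peers
! Shows reachability and the currently selected time source
show ntp peer-status
```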

Create VLANs for VersaStack IP Traffic

Cisco Nexus 9000 A and Cisco Nexus 9000 B

To create the necessary virtual local area networks (VLANs), follow this step on both switches:

1. From the global configuration mode, run the following commands:

vlan <IB-Mgmt VLAN id>

name IB-MGMT-VLAN

vlan <Native VLAN id>

name Native-VLAN

vlan <vMotion VLAN id>

name vMotion-VLAN

vlan <VM Traffic VLAN id>

name VM-Traffic-VLAN

vlan <iSCSI-A VLAN id>

name iSCSI-A-VLAN

vlan <iSCSI-B VLAN id>

name iSCSI-B-VLAN

exit

copy run start
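
As an illustration only, the VLAN definitions above might look as follows once the placeholders are filled in. The VLAN IDs used here (11, 2, 3173, 3174, 3161, 3162) are assumptions chosen for this sketch, not values mandated by the design; substitute the IDs planned for your environment. The final command lists the VLANs so that the names can be checked against the plan.

```
! Hypothetical VLAN IDs, for illustration only
vlan 11
  name IB-MGMT-VLAN
vlan 2
  name Native-VLAN
vlan 3173
  name vMotion-VLAN
vlan 3174
  name VM-Traffic-VLAN
vlan 3161
  name iSCSI-A-VLAN
vlan 3162
  name iSCSI-B-VLAN
exit
show vlan brief
```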

Configure Virtual Port Channel Domain

Cisco Nexus 9000 A

To configure the vPC domain for switch A, follow these steps:

1. From the global configuration mode, create a new vPC domain:

vpc domain 10

2. Make the Nexus 9000A the primary vPC peer by defining a low priority value:

role priority 10

3. Use the management interfaces on the supervisors of the Nexus 9000s to establish a keepalive link:

peer-keepalive destination <Mgmt. IP address for Switch B> source <Mgmt. IP address for Switch A>

4. Enable the following features for this vPC domain:

peer-switch

delay restore 150

peer-gateway

ip arp synchronize

auto-recovery

copy run start

Cisco Nexus 9000 B

To configure the vPC domain for switch B, follow these steps:

1. From the global configuration mode, create a new vPC domain:

vpc domain 10

2. Make the Nexus 9000 B the secondary vPC peer by defining a higher priority value than the one set on the Nexus 9000 A:

role priority 20

3. Use the management interfaces on the supervisors of the Nexus 9000s to establish a keepalive link:

peer-keepalive destination <Mgmt. IP address for Switch A> source <Mgmt. IP address for Switch B>

4. Enable the following features for this vPC domain:

peer-switch

delay restore 150

peer-gateway

ip arp synchronize

auto-recovery

copy run start
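
Once both switches have their vPC domain configured, the keepalive and role settings can be checked with the commands below. This is a sketch; because the peer link is configured in the next section, the overall vPC status is expected to remain incomplete at this stage, and only the keepalive over the management interfaces should be reported as alive.

```
! Keepalive status between the mgmt0 addresses of the two switches
show vpc peer-keepalive
! Configured role priority (10 on switch A, 20 on switch B)
show vpc role
! The vPC domain configuration as entered
show running-config vpc
```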

Configure Network Interfaces for the vPC Peer Links

To configure the network interfaces for the vPC Peer links, follow these steps:

Cisco Nexus 9000 A

1. Define a port description for the interfaces connecting to vPC Peer <nexus-B-hostname>.

interface Eth1/33

description VPC Peer <Nexus-B Switch Name>:1/33

interface Eth1/34

description VPC Peer <Nexus-B Switch Name>:1/34

2. Apply a port channel to both vPC Peer links and bring up the interfaces.

interface Eth1/33,Eth1/34

channel-group 10 mode active

no shutdown

3. Define a description for the port-channel connecting to <nexus-B-hostname>.

interface Po10

description vPC peer-link

4. Make the port-channel a switchport and configure a trunk to allow in-band management, VM traffic, vMotion, iSCSI and the native VLAN.

switchport

switchport mode trunk

switchport trunk native vlan <Native VLAN id>

switchport trunk allowed vlan <IB-MGMT VLAN id>, <vMotion VLAN id>, <VM Traffic VLAN id>, <iSCSI-A VLAN id>, <iSCSI-B VLAN id>

5. Make the port channel and associated interfaces spanning tree network ports.

spanning-tree port type network

6. Set port speed and duplex.

speed 100000

duplex full

no negotiate auto

7. Make this port-channel the VPC peer link and bring it up.

vpc peer-link

no shutdown

copy run start

Cisco Nexus 9000 B

1. Define a port description for the interfaces connecting to vPC Peer <nexus-A-hostname>.

interface Eth1/33

description VPC Peer <Nexus-A Switch Name>:1/33

interface Eth1/34

description VPC Peer <Nexus-A Switch Name>:1/34

2. Apply a port channel to both VPC Peer links and bring up the interfaces.

interface Eth1/33,Eth1/34

channel-group 10 mode active

no shutdown

3. Define a description for the port-channel connecting to <nexus-A-hostname>.

interface Po10

description vPC peer-link

4. Make the port-channel a switchport and configure a trunk to allow in-band management, VM traffic, vMotion, iSCSI and the native VLAN.

switchport

switchport mode trunk

switchport trunk native vlan <Native VLAN id>

switchport trunk allowed vlan <IB-MGMT VLAN id>, <vMotion VLAN id>, <VM Traffic VLAN id>, <iSCSI-A VLAN id>, <iSCSI-B VLAN id>

5. Make the port channel and associated interfaces spanning tree network ports.

spanning-tree port type network

6. Set port speed and duplex.

speed 100000

duplex full

no negotiate auto

7. Make this port-channel the VPC peer link and bring it up.

vpc peer-link

no shutdown

copy run start
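
After the peer link is configured on both switches, its state can be checked with the following commands. This is a sketch rather than a validated step; the expectations in the comments assume that Eth1/33 and Eth1/34 are cabled as described in the physical connectivity section.

```
! Po10 should list Eth1/33 and Eth1/34 as bundled members
show port-channel summary
! Peer status and the peer link on Po10
show vpc brief
! Any mismatched global parameters between the two peers are reported here
show vpc consistency-parameters global
```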

Configure Network Interfaces to Cisco UCS Fabric Interconnects

To configure the network interfaces for the Cisco UCS Fabric Interconnects, follow these steps:

Cisco Nexus 9000 A

1. Define a description for the port-channel connecting to <UCS Cluster Name>-A.

interface Po13

description <UCS Cluster Name>-A

2. Make the port-channel a switchport and configure a trunk to allow in-band management, VM traffic, vMotion, iSCSI and the native VLANs.

switchport

switchport mode trunk

switchport trunk native vlan <Native VLAN id>

switchport trunk allowed vlan <IB-MGMT VLAN id>, <vMotion VLAN id>, <VM Traffic VLAN id>, <iSCSI-A VLAN id>, <iSCSI-B VLAN id>

3. Set port speed and duplex.

speed 100000

duplex full

no negotiate auto

4. Make the port channel and associated interfaces spanning tree edge ports.

spanning-tree port type edge trunk

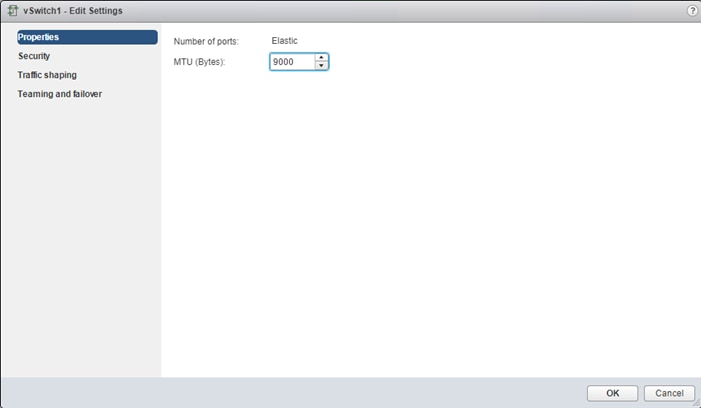

5. Set the MTU to be 9216 to support jumbo frames.

mtu 9216

6. Make this a VPC port-channel and bring it up.

vpc 13

no shutdown

7. Define a port description for the interface connecting to <UCS Cluster Name>-A.

interface Eth1/31

description <UCS Cluster Name>-A:1/53

8. Apply it to a port channel and bring up the interface.

channel-group 13 force mode active

no shutdown

9. Define a description for the port-channel connecting to <UCS Cluster Name>-B.

interface Po14

description <UCS Cluster Name>-B

10. Make the port-channel a switchport and configure a trunk to allow in-band management, VM traffic, vMotion, iSCSI and the native VLANs.

switchport

switchport mode trunk

switchport trunk native vlan <Native VLAN id>

switchport trunk allowed vlan <IB-MGMT VLAN id>, <vMotion VLAN id>, <VM Traffic VLAN id>, <iSCSI-A VLAN id>, <iSCSI-B VLAN id>

11. Set port speed and duplex.

speed 100000

duplex full

no negotiate auto

12. Make the port channel and associated interfaces spanning tree edge ports.

spanning-tree port type edge trunk

13. Set the MTU to be 9216 to support jumbo frames.

mtu 9216

14. Make this a VPC port-channel and bring it up.

vpc 14

no shutdown

15. Define a port description for the interface connecting to <UCS Cluster Name>-B.

interface Eth1/32

description <UCS Cluster Name>-B:1/53

16. Apply it to a port channel and bring up the interface.

channel-group 14 force mode active

no shutdown

copy run start

Cisco Nexus 9000 B

1. Define a description for the port-channel connecting to <UCS Cluster Name>-A.

interface Po13

description <UCS Cluster Name>-A

2. Make the port-channel a switchport and configure a trunk to allow in-band management, VM traffic, vMotion, iSCSI and the native VLANs.

switchport

switchport mode trunk

switchport trunk native vlan <Native VLAN id>

switchport trunk allowed vlan <IB-MGMT VLAN id>, <vMotion VLAN id>, <VM Traffic VLAN id>, <iSCSI-A VLAN id>, <iSCSI-B VLAN id>

3. Set port speed and duplex.

speed 100000

duplex full

no negotiate auto

4. Make the port channel and associated interfaces spanning tree edge ports.

spanning-tree port type edge trunk

5. Set the MTU to 9216 to support jumbo frames.

mtu 9216

6. Make this a VPC port-channel and bring it up.

vpc 13

no shutdown

7. Define a port description for the interface connecting to <UCS Cluster Name>-A.

interface Eth1/31

description <UCS Cluster Name>-A:1/54

8. Apply it to a port channel and bring up the interface.

channel-group 13 force mode active

no shutdown

9. Define a description for the port-channel connecting to <UCS Cluster Name>-B.

interface Po14

description <UCS Cluster Name>-B

10. Make the port-channel a switchport and configure a trunk to allow in-band management, VM traffic, vMotion, iSCSI and the native VLANs.

switchport

switchport mode trunk

switchport trunk native vlan <Native VLAN id>

switchport trunk allowed vlan <IB-MGMT VLAN id>, <vMotion VLAN id>, <VM Traffic VLAN id>, <iSCSI-A VLAN id>, <iSCSI-B VLAN id>

11. Set port speed and duplex.

speed 100000

duplex full

no negotiate auto

12. Make the port channel and associated interfaces spanning tree edge ports.

spanning-tree port type edge trunk

13. Set the MTU to be 9216 to support jumbo frames.

mtu 9216

14. Make this a VPC port-channel and bring it up.

vpc 14

no shutdown

15. Define a port description for the interface connecting to <UCS Cluster Name>-B.

interface Eth1/32

description <UCS Cluster Name>-B:1/54

16. Apply it to a port channel and bring up the interface.

channel-group 14 force mode active

no shutdown

copy run start

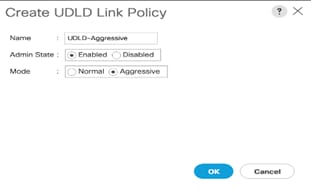

Enable UDLD for Cisco UCS Interfaces

Enable aggressive unidirectional link detection (UDLD) on interfaces connected to Cisco UCS.

Cisco Nexus A and Cisco Nexus B

1. From the global configuration mode, run either of the following commands depending on the connectivity:

interface Eth1/x

# For copper cable or Twinax connections, use the following command:

udld aggressive

# For fiber optic connections, use the following command:

udld enable

Configure Network Interfaces Connected to IBM FS9100 iSCSI Ports (iSCSI Deployment)

This configuration step can be skipped if the UCS environment does not need access to storage using iSCSI.

To configure the network interfaces for IBM FS9100 iSCSI ports, follow these steps:

Cisco Nexus 9000 A & B

The 100Gbps design in this document uses a pair of Nexus 9336C-FX2 switches in which all ports are of the 100Gbps Quad Small Form Factor Pluggable Plus (QSFP+) type. The IBM FS9100 has 25Gbps SFP+ ports for iSCSI connectivity. The 100Gbps QSFP+ ports on the Nexus 9336C-FX2 switches in this design have been connected to the IBM FS9100 iSCSI Ethernet SFP+ ports using a QSFP+ breakout cable.

Configuration of the QSFP+ ports will use the interface breakout command as shown in this example to turn the 100G interface Ethernet 1/11 into 4x25G interfaces on both the Nexus switches:

show running-config interface Ethernet1/11

interface Ethernet1/11

no switchport

interface breakout module 1 port 11 map 25g-4x

show running-config interface Ethernet1/11/1-4

interface Ethernet1/11/1

interface Ethernet1/11/2

interface Ethernet1/11/3

interface Ethernet1/11/4

Connectivity between the Nexus switches and IBM FS9100 for iSCSI access depends on the Nexus 9000 switch model used within the architecture. If any supported Nexus switch with 25Gbps capable SFP ports is used, breakout cable is not required and ports from the switch to IBM FS9100 can be connected directly using the SFP transceivers on both sides.

Cisco Nexus 9000 A

1. Define a description for the Ethernet port connecting to <FS9100 Node1, P5>.

interface Ethernet1/11/1

description <FS9100-Node1-iSCSI-P5>

2. Make the interface an access port and configure the switchport access VLAN.

switchport mode access

switchport access vlan <iSCSI-A VLAN id>

3. Make the interface a spanning tree edge port.

spanning-tree port type edge

4. Set the MTU to be 9216 to support jumbo frames.

mtu 9216

no shutdown

copy run start

5. Define a description for the Ethernet port connecting to <FS9100 Node2, P5>.

interface Ethernet1/11/2

description <FS9100-Node2-iSCSI-P5>

6. Make the interface an access port and configure the switchport access VLAN.

switchport mode access

switchport access vlan <iSCSI-A VLAN id>

7. Make the interface a spanning tree edge port.

spanning-tree port type edge

8. Set the MTU to be 9216 to support jumbo frames.

mtu 9216

no shutdown

copy run start

Cisco Nexus 9000 B

1. Define a description for the Ethernet port connecting to <FS9100 Node1, P6>.

interface Ethernet1/11/1

description <FS9100-Node1-iSCSI-P6>

2. Make the interface an access port and configure the switchport access VLAN.

switchport mode access

switchport access vlan <iSCSI-B VLAN id>

3. Make the interface a spanning tree edge port.

spanning-tree port type edge

4. Set the MTU to be 9216 to support jumbo frames.

mtu 9216

no shutdown

copy run start

5. Define a description for the Ethernet port connecting to <FS9100 Node2, P6>.

interface Ethernet1/11/2

description <FS9100-Node2-iSCSI-P6>

6. Make the interface an access port and configure the switchport access VLAN.

switchport mode access

switchport access vlan <iSCSI-B VLAN id>

7. Make the interface a spanning tree edge port.

spanning-tree port type edge

8. Set the MTU to be 9216 to support jumbo frames.

mtu 9216

no shutdown

copy run start
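
The breakout interfaces facing the IBM FS9100 can be checked with the commands below. This is a sketch; on Nexus 9000 B, substitute the iSCSI-B VLAN placeholder. The links are only expected to come up once the corresponding FS9100 ports are cabled and enabled.

```
! Link state, speed and access VLAN of the two breakout interfaces in use
show interface ethernet 1/11/1 brief
show interface ethernet 1/11/2 brief
! Confirms access mode and the access VLAN on the port
show interface ethernet 1/11/1 switchport
! Lists the ports that are members of the iSCSI VLAN
show vlan id <iSCSI-A VLAN id>
```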

Management Uplink into Existing Network Infrastructure

Depending on the available network infrastructure, several methods and features can be used to uplink the VersaStack Pod environment. If an existing Cisco Nexus environment is present, we recommend using vPCs to uplink the Cisco Nexus switches included in the VersaStack environment into the infrastructure. The following procedure can be used to create an uplink vPC to the existing environment.

Cisco Nexus 9000 A and B using Port Channel Example

To enable management access across the IP switching environment using a port channel, run the following commands in configuration mode:

1. Define a description for the port-channel connecting to management switch.

interface po6

description IB-MGMT

2. Configure the port channel as an access port carrying the in-band management VLAN traffic.

switchport

switchport mode access

switchport access vlan <IB-MGMT VLAN id>

3. Make the port channel and associated interfaces normal spanning tree ports.

spanning-tree port type normal

4. Make this a VPC port-channel and bring it up.

vpc 6

no shutdown

5. Define a port description for the interface connecting to the management plane.

interface Eth1/30

description IB-MGMT-SWITCH_uplink

6. Apply it to a port channel and bring up the interface.

channel-group 6 force mode active

no shutdown

7. Save the running configuration to the startup configuration on both Nexus 9000 switches, then run the following commands to check the port and port channel status.

copy run start

sh int eth1/30 br

sh port-channel summary

Switch Testing Commands

The following commands can be used to check for correct switch configuration:

Some of these commands need to be run after further configuration of the VersaStack components is complete in order to show complete results.

show run

show vpc

show port-channel summary

show ntp peer-status

show cdp neighbors

show lldp neighbors

show udld neighbors

show run int

show int

Cisco MDS 9132T Configuration (FC Deployment)

This section explains how to configure the Cisco MDS 9000s for use in a VersaStack environment. Follow the steps precisely because failure to do so could result in an improper configuration.

If directly connecting storage to the Cisco UCS fabric interconnects or if only iSCSI storage access is required, skip this section.

Physical Connectivity

Follow the physical connectivity guidelines for VersaStack as explained in the section Physical Infrastructure.

VersaStack Cisco MDS Base Configuration

The following procedures describe how to configure the Cisco MDS switches for use in a base VersaStack environment. This procedure assumes you are using the Cisco MDS 9132T with NX-OS 8.3(1).

Cisco MDS 9132T A

To set up the initial configuration for the Cisco MDS A switch, <mds-A-hostname>, follow these steps:

On initial boot and connection to the serial or console port of the switch, the NX-OS setup should automatically start and attempt to enter Power on Auto Provisioning. Enter y to get to the System Admin Account Setup.

1. Configure the switch using command line.

---- System Admin Account Setup ----

Do you want to enforce secure password standard (yes/no) [y]: Enter

Enter the password for "admin": <password>

Confirm the password for "admin": <password>

Would you like to enter the basic configuration dialog (yes/no): yes

Create another login account (yes/no) [n]: Enter

Configure read-only SNMP community string (yes/no) [n]: Enter

Configure read-write SNMP community string (yes/no) [n]: Enter

Enter the switch name : <mds-A-hostname>

Continue with Out-of-band (mgmt0) management configuration? (yes/no) [y]: Enter

Mgmt0 IPv4 address : <mds-A-mgmt0-ip>

Mgmt0 IPv4 netmask : <mds-A-mgmt0-netmask>

Configure the default gateway? (yes/no) [y]: Enter

IPv4 address of the default gateway : <mds-A-mgmt0-gw>

Configure advanced IP options? (yes/no) [n]: Enter

Enable the ssh service? (yes/no) [y]: Enter

Type of ssh key you would like to generate (dsa/rsa) [rsa]: Enter

Number of rsa key bits <1024-2048> [1024]: Enter

Enable the telnet service? (yes/no) [n]: Enter

Configure congestion/no_credit drop for fc interfaces? (yes/no) [y]: Enter

Enter the type of drop to configure congestion/no_credit drop? (con/no) [c]: Enter

Enter milliseconds in multiples of 10 for congestion-drop for logical-type edge

in range (<200-500>/default), where default is 500. [d]: Enter

Enable the http-server? (yes/no) [y]: Enter

Configure clock? (yes/no) [n]: Enter

Configure timezone? (yes/no) [n]: Enter

Configure summertime? (yes/no) [n]: Enter

Configure the ntp server? (yes/no) [n]: yes

NTP server IPv4 address : <nexus-A-mgmt0-ip>

Configure default switchport interface state (shut/noshut) [shut]: Enter

Configure default switchport trunk mode (on/off/auto) [on]: auto

Configure default switchport port mode F (yes/no) [n]: yes

Configure default zone policy (permit/deny) [deny]: Enter

Enable full zoneset distribution? (yes/no) [n]: Enter

Configure default zone mode (basic/enhanced) [basic]: Enter

2. Review the configuration summary before enabling the configuration.

Cisco MDS 9132T B

1. Configure the switch using command line.

---- System Admin Account Setup ----

Do you want to enforce secure password standard (yes/no) [y]: Enter

Enter the password for "admin": <password>

Confirm the password for "admin": <password>

Would you like to enter the basic configuration dialog (yes/no): yes

Create another login account (yes/no) [n]: Enter

Configure read-only SNMP community string (yes/no) [n]: Enter

Configure read-write SNMP community string (yes/no) [n]: Enter

Enter the switch name : <mds-B-hostname>

Continue with Out-of-band (mgmt0) management configuration? (yes/no) [y]: Enter

Mgmt0 IPv4 address : <mds-B-mgmt0-ip>

Mgmt0 IPv4 netmask : <mds-B-mgmt0-netmask>

Configure the default gateway? (yes/no) [y]: Enter

IPv4 address of the default gateway : <mds-B-mgmt0-gw>

Configure advanced IP options? (yes/no) [n]: Enter

Enable the ssh service? (yes/no) [y]: Enter

Type of ssh key you would like to generate (dsa/rsa) [rsa]: Enter

Number of rsa key bits <1024-2048> [1024]: Enter

Enable the telnet service? (yes/no) [n]: Enter

Configure congestion/no_credit drop for fc interfaces? (yes/no) [y]: Enter

Enter the type of drop to configure congestion/no_credit drop? (con/no) [c]: Enter

Enter milliseconds in multiples of 10 for congestion-drop for logical-type edge

in range (<200-500>/default), where default is 500. [d]: Enter

Enable the http-server? (yes/no) [y]: Enter

Configure clock? (yes/no) [n]: Enter

Configure timezone? (yes/no) [n]: Enter

Configure summertime? (yes/no) [n]: Enter

Configure the ntp server? (yes/no) [n]: yes

NTP server IPv4 address : <nexus-A-mgmt0-ip>

Configure default switchport interface state (shut/noshut) [shut]: Enter

Configure default switchport trunk mode (on/off/auto) [on]: auto

Configure default switchport port mode F (yes/no) [n]: yes

Configure default zone policy (permit/deny) [deny]: Enter

Enable full zoneset distribution? (yes/no) [n]: Enter

Configure default zone mode (basic/enhanced) [basic]: Enter

2. Review the configuration summary before enabling the configuration.

Cisco MDS 9132T Initial Configuration Setup

To perform Cisco MDS Initial configuration, follow these steps:

Enable Licenses

Cisco MDS 9132T A and Cisco MDS 9132T B

To enable the correct features on the Cisco MDS switches, follow these steps:

1. Log in as admin.

2. Run the following commands:

configure terminal

feature npiv

feature fport-channel-trunk

feature lldp

device-alias mode enhanced

device-alias commit

Add Second NTP server

Cisco MDS 9132T A and Cisco MDS 9132T B

To configure the second NTP server, follow this step:

1. From the global configuration mode, run the following command:

ntp server <nexus-B-mgmt0-ip>
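
The feature and NTP settings on each MDS switch can be checked with the commands below. This is a sketch, not a validated step; it assumes the Nexus switches are already serving NTP on their mgmt0 addresses, as configured in the optional NTP procedure earlier.

```
! Should report that NPIV is enabled
show npiv status
! Should show the device-alias database in enhanced mode
show device-alias status
! Should list both Nexus mgmt0 addresses as NTP servers
show ntp peers
```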

Configure Individual Ports

To configure Cisco MDS individual ports used for Cisco UCS and IBM FS9100 connectivity, follow these steps:

Cisco MDS 9132T A

To configure individual ports and port-channels for switch A, follow this step:

From the global configuration mode, run the following commands:

interface fc1/1

switchport description <ucs-clustername>-a:1/1

channel-group 1 force

no shutdown

exit

interface fc1/2

switchport description <ucs-clustername>-a:1/2

channel-group 1 force

no shutdown

exit

interface fc1/3

switchport description <ucs-clustername>-a:1/3

channel-group 1 force

no shutdown

exit

interface fc1/4

switchport description <ucs-clustername>-a:1/4

channel-group 1 force

no shutdown

exit

interface fc1/5

switchport description <FS9100-Node1-FC1>

switchport speed 16000

no shutdown

exit

interface fc1/6

switchport description <FS9100-Node1-FC3>

switchport speed 16000

no shutdown

exit

interface fc1/7

switchport description <FS9100-Node2-FC1>

switchport speed 16000

no shutdown

exit

interface fc1/8

switchport description <FS9100-Node2-FC3>

switchport speed 16000

no shutdown

exit

interface port-channel1

channel mode active

switchport description <ucs-clustername>-a

no shutdown

exit

Cisco MDS 9132T B

To configure individual ports and port-channels for switch B, follow this step:

From the global configuration mode, run the following commands:

interface fc1/1

switchport description <ucs-clustername>-b:1/1

channel-group 2 force

no shutdown

exit

interface fc1/2

switchport description <ucs-clustername>-b:1/2

channel-group 2 force

no shutdown

exit

interface fc1/3

switchport description <ucs-clustername>-b:1/3

channel-group 2 force

no shutdown

exit

interface fc1/4

switchport description <ucs-clustername>-b:1/4

channel-group 2 force

no shutdown

exit

interface fc1/5

switchport description <FS9100-Node1-FC2>

switchport speed 16000

no shutdown

exit

interface fc1/6

switchport description <FS9100-Node1-FC4>

switchport speed 16000

no shutdown

exit

interface fc1/7

switchport description <FS9100-Node2-FC2>

switchport speed 16000

no shutdown

exit

interface fc1/8

switchport description <FS9100-Node2-FC4>

switchport speed 16000

no shutdown

exit

interface port-channel2

channel mode active

switchport description <ucs-clustername>-b

no shutdown

exit
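Because the per-port commands for the two switches differ only in the fabric letter, the port-channel number, and the FS9100 port descriptions, they can be generated rather than typed. The Python sketch below is one possible way to do this, assuming the port layout shown in this section (fc1/1-4 toward Cisco UCS, fc1/5-8 toward the FS9100). The function names are hypothetical, and the angle-bracket placeholders are left for you to substitute.

```python
def uplink_commands(fabric, channel_id, ports=(1, 2, 3, 4), ucs_name="<ucs-clustername>"):
    """Commands for the FC ports that join the port-channel toward Cisco UCS."""
    cmds = []
    for port in ports:
        cmds += [
            f"interface fc1/{port}",
            f"switchport description {ucs_name}-{fabric}:1/{port}",
            f"channel-group {channel_id} force",
            "no shutdown",
            "exit",
        ]
    return cmds


def storage_commands(descriptions, first_port=5, speed=16000):
    """Commands for the FC ports cabled to the FS9100, numbered from first_port."""
    cmds = []
    for offset, description in enumerate(descriptions):
        cmds += [
            f"interface fc1/{first_port + offset}",
            f"switchport description {description}",
            f"switchport speed {speed}",
            "no shutdown",
            "exit",
        ]
    return cmds


def port_channel_commands(fabric, channel_id, ucs_name="<ucs-clustername>"):
    """Commands for the port-channel interface itself."""
    return [
        f"interface port-channel{channel_id}",
        "channel mode active",
        f"switchport description {ucs_name}-{fabric}",
        "no shutdown",
        "exit",
    ]


def switch_commands(fabric, channel_id, storage_descriptions):
    """Full command list for one switch, in the order used in this section."""
    return (
        uplink_commands(fabric, channel_id)
        + storage_commands(storage_descriptions)
        + port_channel_commands(fabric, channel_id)
    )


switch_a = switch_commands(
    "a", 1,
    ["<FS9100-Node1-FC1>", "<FS9100-Node1-FC3>", "<FS9100-Node2-FC1>", "<FS9100-Node2-FC3>"],
)
switch_b = switch_commands(
    "b", 2,
    ["<FS9100-Node1-FC2>", "<FS9100-Node1-FC4>", "<FS9100-Node2-FC2>", "<FS9100-Node2-FC4>"],
)

print("\n".join(switch_a))
```

Review the generated text against the listings above before pasting it into a switch session; the sketch only formats strings and does not connect to any device.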

Create VSANs

Cisco MDS 9132T A

To create the necessary VSANs for fabric A and add ports to them, follow these steps:

From the global configuration mode, run the following commands:

vsan database

vsan <vsan-a-id>

vsan <vsan-a-id> name Fabric-A

exit

zone smart-zoning enable vsan <vsan-a-id>

vsan database

vsan <vsan-a-id> interface fc1/5

vsan <vsan-a-id> interface fc1/6

vsan <vsan-a-id> interface fc1/7

vsan <vsan-a-id> interface fc1/8

vsan <vsan-a-id> interface port-channel1

exit

Cisco MDS 9132T B

To create the necessary VSANs for fabric B and add ports to them, follow these steps:

From the global configuration mode, run the following commands:

vsan database

vsan <vsan-b-id>

vsan <vsan-b-id> name Fabric-B

exit

zone smart-zoning enable vsan <vsan-b-id>

vsan database

vsan <vsan-b-id> interface fc1/5

vsan <vsan-b-id> interface fc1/6

vsan <vsan-b-id> interface fc1/7

vsan <vsan-b-id> interface fc1/8

vsan <vsan-b-id> interface port-channel2

exit
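A quick consistency check can catch a storage port or port-channel that was left out of the VSAN. The Python sketch below is an illustration only: it scans a list of command lines for `vsan <id> interface <name>` entries and compares the result with the interfaces this section expects. The VSAN ID `101` and the function name are hypothetical.

```python
import re


def vsan_members(commands):
    """Map each VSAN ID to the set of interfaces assigned to it."""
    members = {}
    for line in commands:
        match = re.fullmatch(r"vsan (\S+) interface (\S+)", line.strip())
        if match:
            members.setdefault(match.group(1), set()).add(match.group(2))
    return members


# Interfaces that this section places in the fabric A VSAN.
EXPECTED_FABRIC_A = {"fc1/5", "fc1/6", "fc1/7", "fc1/8", "port-channel1"}

# Hypothetical command list with VSAN ID 101; fc1/8 is deliberately omitted.
commands = [
    "vsan database",
    "vsan 101",
    "vsan 101 name Fabric-A",
    "vsan 101 interface fc1/5",
    "vsan 101 interface fc1/6",
    "vsan 101 interface fc1/7",
    "vsan 101 interface port-channel1",
    "exit",
]

missing = EXPECTED_FABRIC_A - vsan_members(commands).get("101", set())
print(sorted(missing))
```

The same check applies to fabric B by swapping in `port-channel2` and the fabric B VSAN ID.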

IBM FlashSystem 9100

FlashSystem 9100 systems have specific connection requirements. Care must be taken to note the orientation of each node canister in the control enclosure.

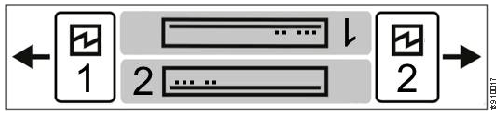

The FlashSystem 9100 control enclosure contains two node canisters. A label on the control enclosure identifies each node canister and power supply unit (PSU). As Figure 6 shows, node canister 1 is on top and node canister 2 is on the bottom. Because the node canisters are inverted, the location of the ports and the port numbering are oriented differently on each node canister. It is important to remember this orientation when installing adapters and cables.

Figure 6 Orientation of the Node Canisters and PSUs

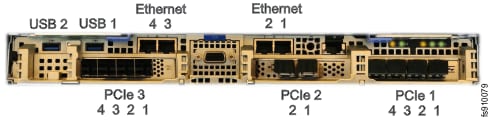

For example, Figure 7 shows the top node canister. On this canister, the PCIe slot and port numbering goes from right to left. PCIe adapter slot 1 contains a 4-port 16 Gbps Fibre Channel adapter, PCIe slot 2 contains a 2-port 25 Gbps iWARP Ethernet adapter, and PCIe slot 3 contains a 4-port 12 Gbps SAS adapter. The onboard Ethernet and USB ports are also shown.

Figure 7 Orientation of Ports on Node Canister 1

Figure 8 shows the bottom node canister. This node canister has the same type and number of adapters installed. However, on the bottom canister, the PCIe slot and port numbering goes from left to right.

Figure 8 Orientation of Ports on Node Canister 2

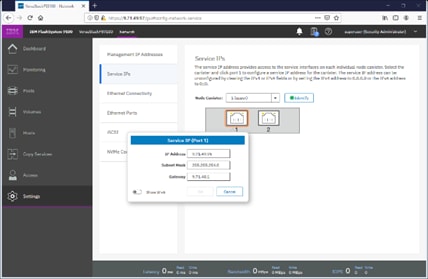

Four 10 Gbps Ethernet ports on each node canister provide system management connections and iSCSI host connectivity. A separate technician port provides access to initialization and service assistant functions. Table 7 describes each port.

Table 7 Summary of Onboard Ethernet Ports

| Onboard Ethernet Port | Speed | Function |
| --- | --- | --- |
| 1 | 10 Gbps | Management IP, Service IP, Host I/O |
| 2 | 10 Gbps | Secondary Management IP, Host I/O |
| 3 | 10 Gbps | Host I/O |
| 4 | 10 Gbps | Host I/O |
| T | 1 Gbps | Technician Port - DHCP/DNS for direct attach service management |

The following connections are required for FlashSystem 9100 control enclosures:

· Each control enclosure requires two Ethernet cables to connect it to an Ethernet switch. One cable connects to port 1 of the top node canister, and the other cable connects to port 1 of the bottom node canister. For 10 Gbps ports, the minimum link speed is 1 Gbps. Both Internet Protocol Version 4 (IPv4) and Internet Protocol Version 6 (IPv6) are supported.

· To ensure system failover operations, Ethernet port 1 on each node canister must be connected to the same set of subnets. If used, Ethernet port 2 on each node canister must also be connected to the same set of subnets. However, the subnets for Ethernet port 1 do not have to be the same as Ethernet port 2.

· If you have more than one control enclosure in your system, the control enclosures communicate through their Fibre Channel ports.

· Each FlashSystem 9100 node canister also has three PCIe interface slots to support optional host interface adapters. The host interface adapters can be supported in any of the interface slots. Table 8 provides an overview of the host interface adapters.

· The 2-port SAS host interface adapter supports expansion enclosures. In total, FlashSystem 9100 control enclosures can have up to 20 chain-linked expansion enclosures, 10 per port.
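The failover requirement for Ethernet port 1 (and port 2, if used) can be checked against the planning worksheet before cabling. The Python sketch below uses the standard `ipaddress` module and simplifies the requirement to a single subnet per port; the addresses are hypothetical examples, not values from this design.

```python
import ipaddress


def same_subnet(addr_a, addr_b):
    """True when two interface addresses in CIDR notation share the same network."""
    net_a = ipaddress.ip_interface(addr_a).network
    net_b = ipaddress.ip_interface(addr_b).network
    return net_a == net_b


# Hypothetical planned addresses for Ethernet port 1 on each node canister.
node1_port1 = "192.168.160.121/24"
node2_port1 = "192.168.160.122/24"

print(same_subnet(node1_port1, node2_port1))
```

Port 2 can be checked the same way with its own pair of addresses; as noted above, its subnet does not have to match the subnet used for port 1.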

Table 8 Summary of Supported Host Interface Adapters

| Protocol | Feature | Ports | FRU part number | Quantity supported |
| --- | --- | --- | --- | --- |
| 16 Gbps Fibre Channel | AHB3 | 4 | 01YM333 | 0-3 |
| 25 Gbps Ethernet (RoCE) | AHB6 | 2 | 01YM283 | 0-3 |
| 25 Gbps Ethernet (iWARP) | AHB7 | 2 | 01YM285 | 0-3 |
| 12 Gbps SAS Expansion | AHBA | 4, but only 2 are active for SAS expansion chains | 01YM338 | 0-1 |

Each node canister has four onboard 10 Gbps Ethernet ports. A node canister can also support up to three 2-port 25 Gbps Ethernet host interface adapters.

Table 9 lists the fabric types that can be used for communicating between hosts, nodes, and RAID storage systems. These fabric types can be used at the same time.

| Communications type | Host to node | Node to storage system | Node to node |
| --- | --- | --- | --- |
| Fibre Channel SAN | Yes | Yes | Yes |
| iSCSI (10 Gbps Ethernet, 25 Gbps Ethernet) | Yes | Yes | No |
| iSER (25 Gbps Ethernet) | Yes | No | No |

The feature codes for the 16 Gbps Fibre Channel adapter, the 25 Gbps iWARP adapter, and the 25 Gbps RoCE adapter each include standard SFP transceivers. In this design, the 25 Gbps RoCE adapter is used for iSCSI connectivity, and its ports are connected to the Cisco Nexus 9336C-FX2 switches using breakout cables; SFP transceivers are not required for this type of connection.

The 2-port 25 Gbps Ethernet adapter for iWARP and the 2-port 25 Gbps Ethernet adapter for RDMA over Converged Ethernet (RoCE) both support iSER host attachment. However, RoCE and iWARP are not cross-compatible; if you plan to implement iSER in the future, use the adapter that matches the iSER implementation on your SAN.

This document implements traditional iSCSI. An iSER-based iSCSI implementation can be configured once iSER support on the Cisco VIC 1400 series becomes available in a future release of Cisco UCS software.

IBM Service Support Representative (SSR) Configuration

To install the FlashSystem 9100 hardware, an IBM SSR must complete the following tasks:

You must complete the planning tasks and provide completed worksheets to the IBM SSR before they can proceed with installing and initializing your system.

· An IBM SSR unpacks and installs the AF7/AF8 control enclosures and any optional SAS expansion enclosures in the rack.

· Referring to the worksheets that you completed, the IBM SSR completes the cabling.

If you plan to add the FlashSystem 9100 control enclosure to an existing system, such as an existing Storwize® V7000 system, inform the IBM SSR of this intention. In that case, the IBM SSR installs the FlashSystem 9100 control enclosure for you but does not initialize a system on it, because the existing system is already initialized.

After the hardware is installed, an IBM SSR connects a workstation to an AF7/AF8 control enclosure technician port and completes the following tasks:

· Configuring the system with a name, and management and service IP addresses.

· Logging in to the control enclosure using the management GUI and completing the system setup wizard using information from the customer-supplied worksheets.

The SSR configuration steps are documented below.

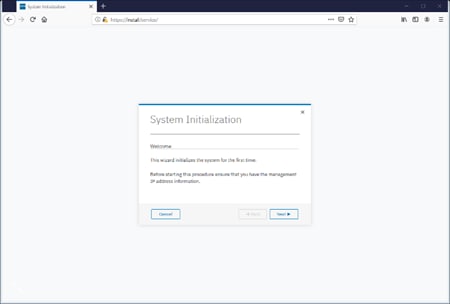

Initialize the System

The initial configuration requires that a workstation be locally attached to the Ethernet port labelled “T” on the upper node canister in the FS9100 enclosure. The “T” (technician) port allocates an IP address to the connected workstation using DHCP and redirects any DNS queries to the System Initialization page. This page shows the status of each node canister in the enclosure and guides you through the initialization process.

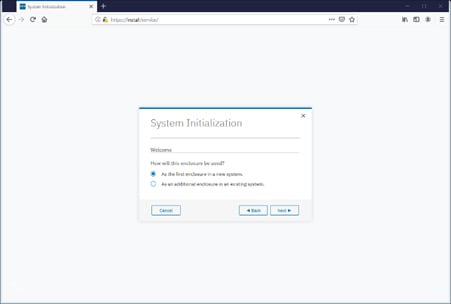

To initialize the system, follow these steps:

1. Ensure both node canisters have been detected and click Proceed.

2. Click Next through the Welcome screen.

3. Select the option to define the enclosure as the first in a new system.

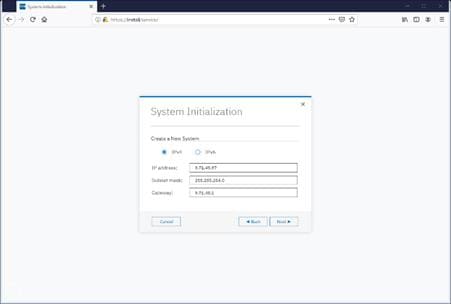

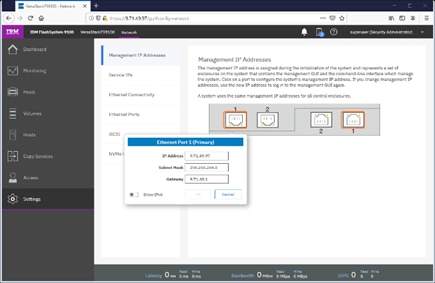

4. Enter the network details for the management interface of the new system. This IP address is sometimes referred to as the management IP or cluster IP, and it is used to manage the FS9100 system through the web interface or through the CLI over SSH.

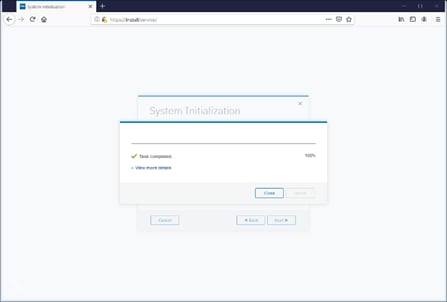

5. Acknowledge the Task Completion message.

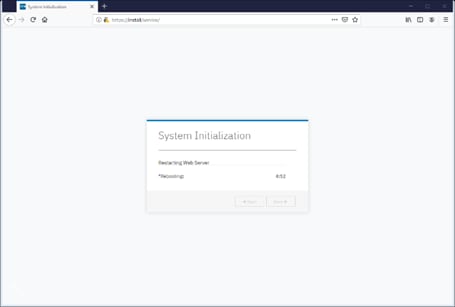

6. The initial configuration steps are now complete, and the system will now restart the Web Server.
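Once the management IP responds, CLI access over SSH can be scripted. The Python sketch below is an assumption-laden illustration rather than part of the SSR procedure: it only builds the `ssh` argument list for running `lssystem` (the Spectrum Virtualize CLI command that displays system properties) and wraps it in a helper that you would call yourself. The IP address shown is hypothetical, and the helper assumes an OpenSSH client is installed on the workstation.

```python
import subprocess


def build_ssh_command(mgmt_ip, user="superuser", remote_cmd="lssystem"):
    """Return the argument list for running one CLI command over SSH."""
    return ["ssh", f"{user}@{mgmt_ip}", remote_cmd]


def run_remote(mgmt_ip, user="superuser", remote_cmd="lssystem"):
    """Run the command and return its output; requires a reachable system."""
    result = subprocess.run(
        build_ssh_command(mgmt_ip, user, remote_cmd),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout


# Hypothetical management IP, used here only to show the resulting command.
print(" ".join(build_ssh_command("192.168.160.120")))
```

Calling `run_remote` prompts for the account password unless key-based authentication has been configured for that user.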

Prepare FS9100 for Customer Environments

Now that the management IP is enabled, all subsequent configuration steps are performed through this interface.

To prepare the FS9100 for customer environments, follow these steps:

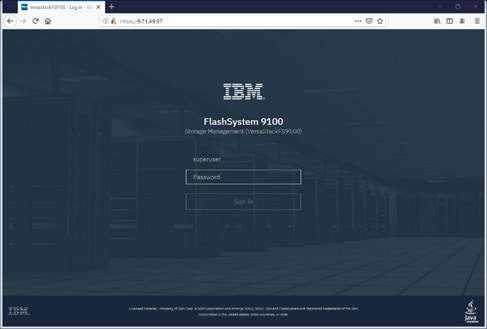

1. Log in using the default credentials:

Username: superuser

Password: passw0rd

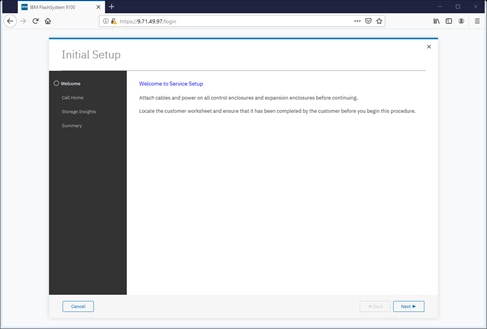

2. Click Next to proceed through the configuration wizard.

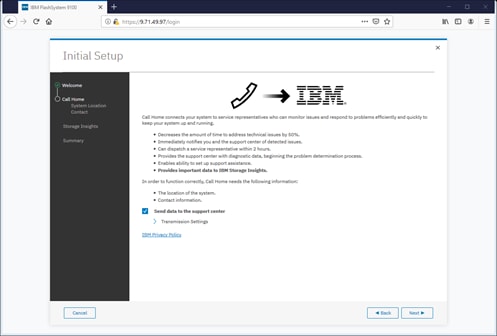

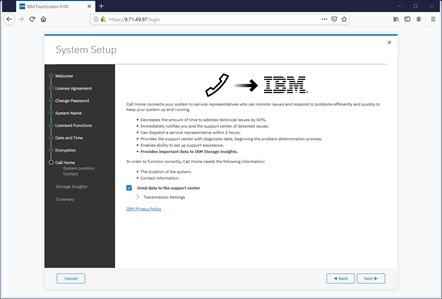

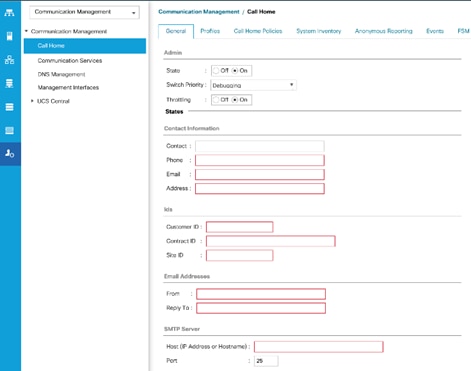

3. For optimal configuration, check the box to enable the Call Home feature.

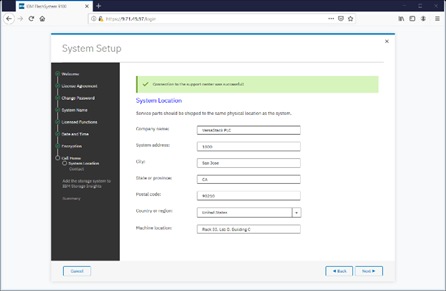

4. Detail the System Location.

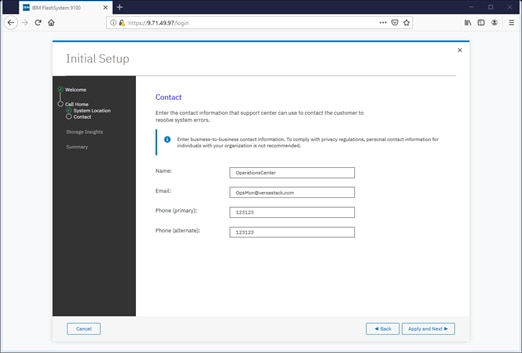

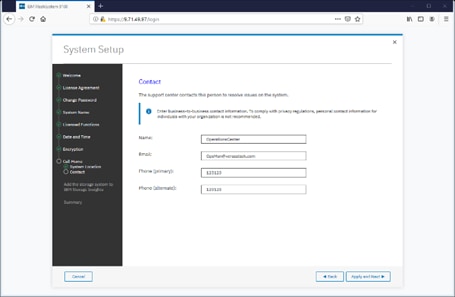

5. Specify the contact details.

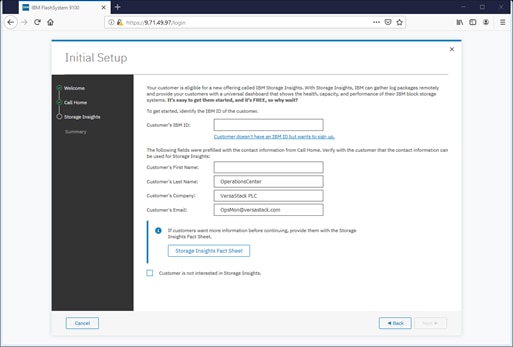

6. Specify the customer’s IBM ID and contact details.

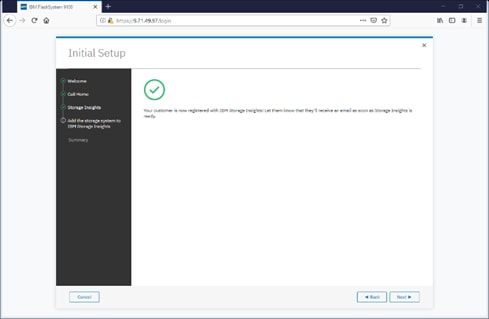

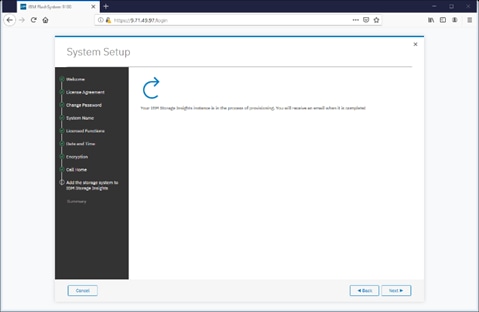

7. Click Next to finalize the IBM Storage Insights registration.

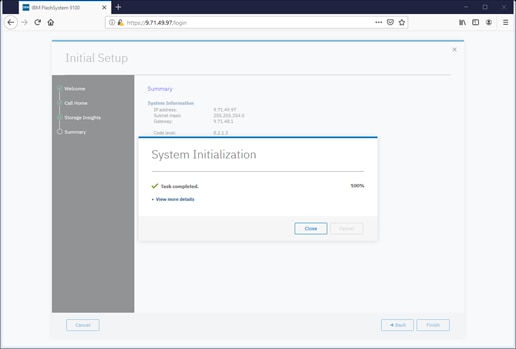

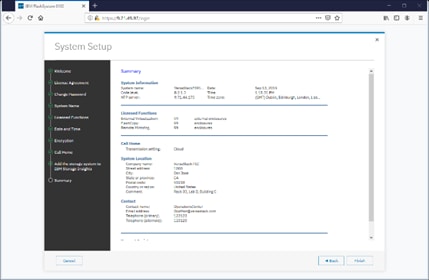

8. Review the Initial Setup summary and click Finish.

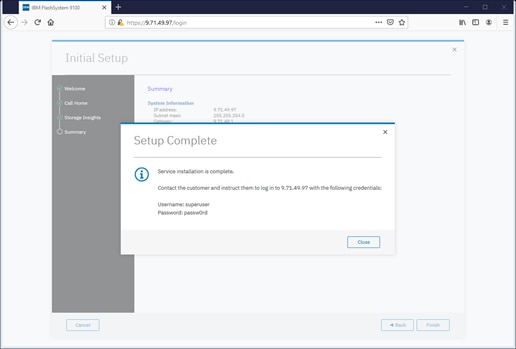

9. Click Close to complete the Service Initialization.

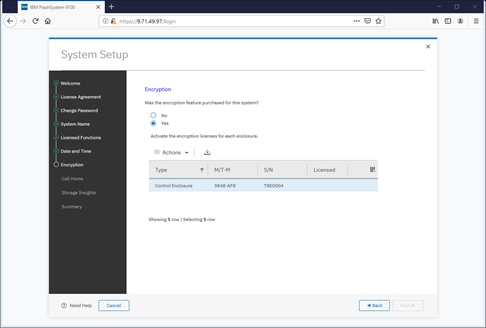

Customer Configuration Setup Tasks via the GUI

After completing the initial tasks above, launch the management GUI and continue configuring the IBM FlashSystem 9100.

To complete the customer configuration tasks, follow these steps:

The following e-Learning module introduces the IBM FlashSystem 9100 management interface and provides an overview of the system setup tasks, including configuring the system, migrating and configuring storage, creating hosts, creating and mapping volumes, and configuring email notifications: Getting Started

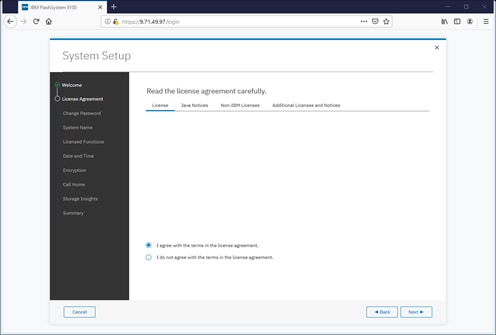

1. Log in to the management GUI using the cluster IP address configured above.

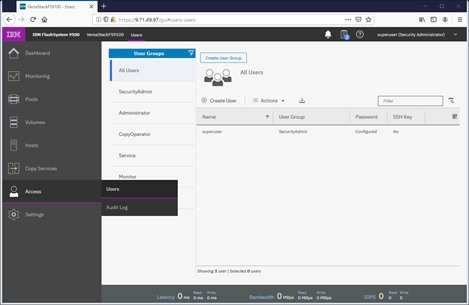

2. Log in using the default credentials:

Username: superuser

Password: passw0rd

3. Click Next to skip the Welcome message.

4. Read and accept the license agreement. Click Accept.

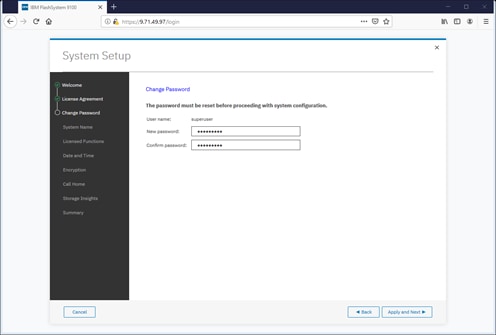

5. Define new credentials for the superuser user account.

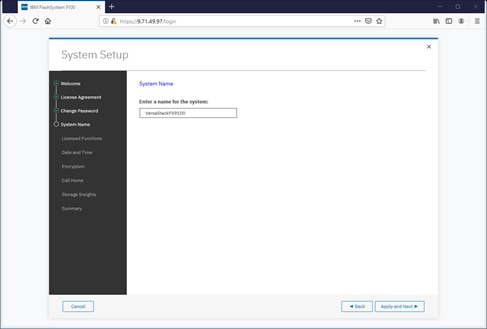

6. Enter the System Name and click Apply and Next to proceed.

7. Enter the license details that were purchased for FlashCopy, Remote Mirroring, Easy Tier, and External Virtualization. Click Apply and Next to proceed.

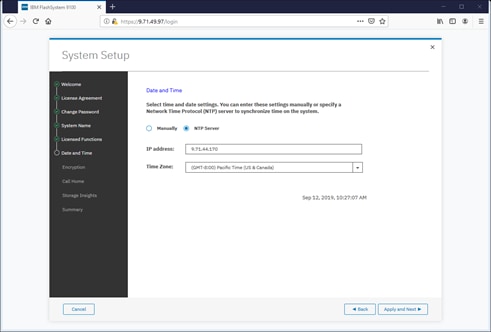

8. Configure the date and time settings, inputting NTP server details if available. Click Apply and Next to proceed.

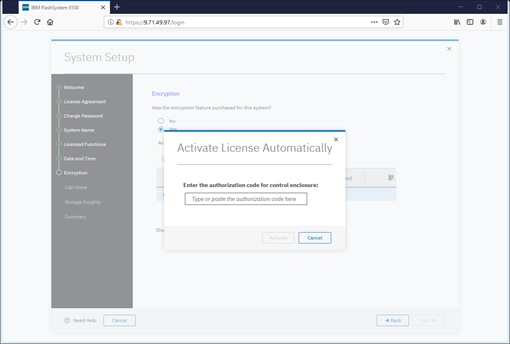

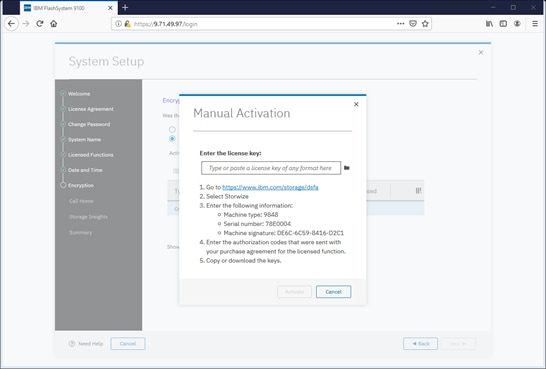

9. Enable the Encryption feature (or leave it disabled). Click Next to proceed.

10. If using encryption, select either Manual or Automatic activation and enter the authorization code or license key accordingly.

It is highly recommended that you configure email event notifications, which automatically notify IBM support centers when problems occur.

11. Enter the complete company name and address and then click Next.

12. Enter the contact person for the support center calls. Click Apply and Next.

IBM Storage Insights is required to enable the performance and health monitoring that remote IBM Support representatives rely on when assisting with support issues.

13. Review the final summary page and click Finish to complete the System Setup wizard.

14. Setup Completed. Click Close.

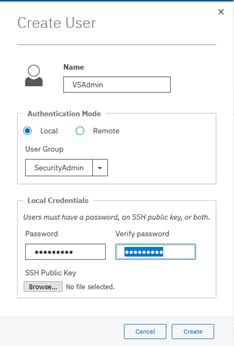

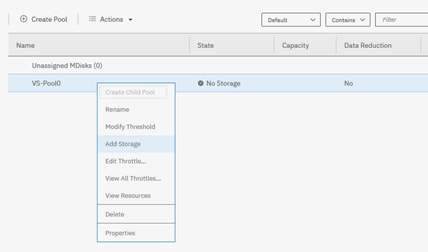

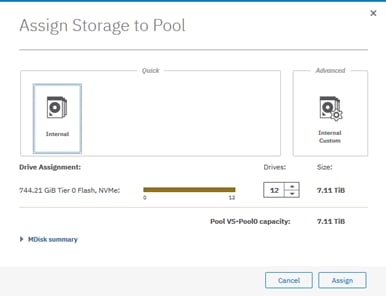

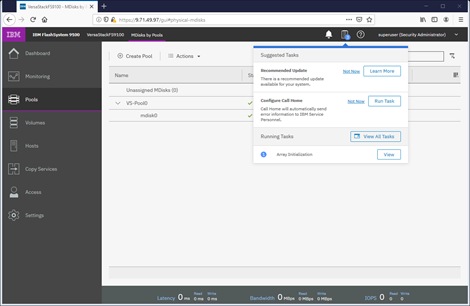

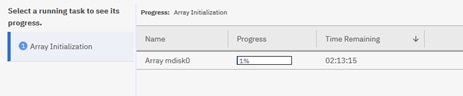

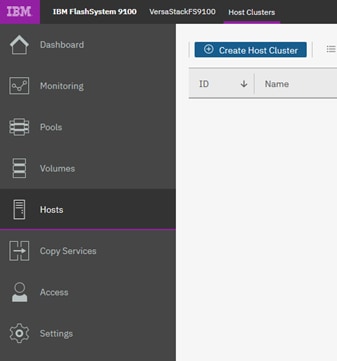

System Dashboard and Post-Initialization Setup Tasks

To configure the necessary post-initialization setup tasks, follow these steps:

1. The System view of IBM FS9100 is now available, as shown below.

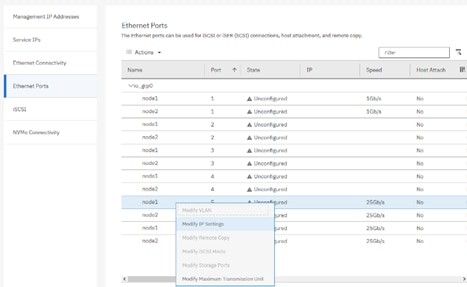

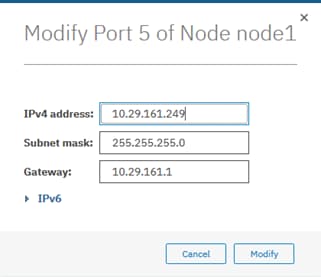

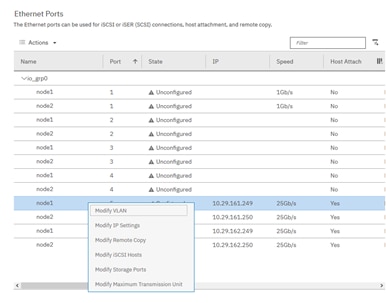

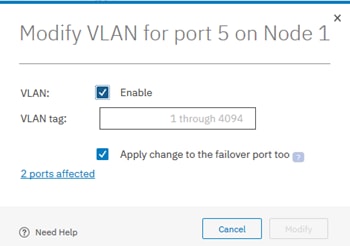

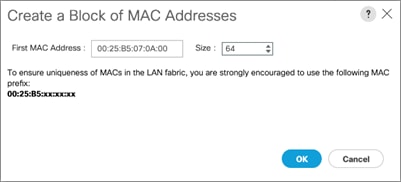

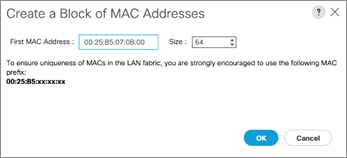

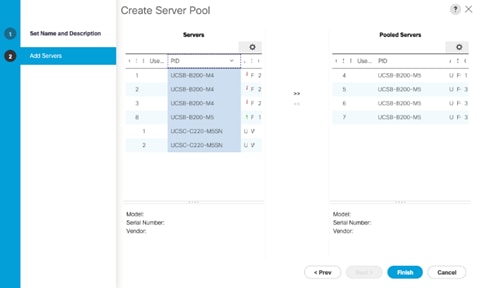

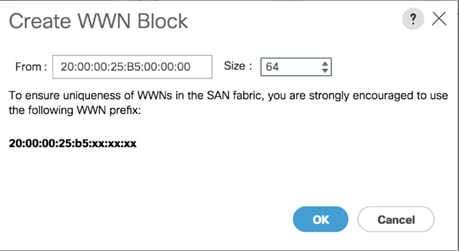

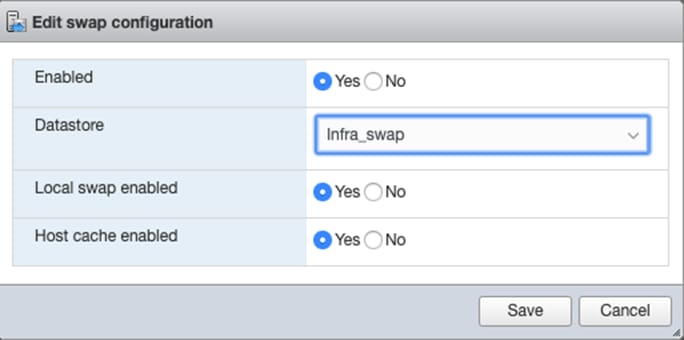

2. In the left side menu, hover over each of the icons on the Navigation Dock to become familiar with the options.