Replacement of OSD-Compute UCS 240M4 - vEPC

Introduction

This document describes the steps that are required to replace a faulty Object Storage Disk (OSD)-Compute server in an Ultra-M setup that hosts StarOS Virtual Network Functions (VNFs).

Background Information

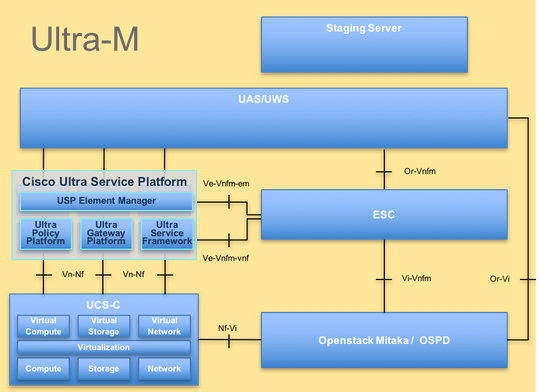

Ultra-M is a pre-packaged and validated virtualized mobile packet core solution designed in order to simplify the deployment of VNFs. OpenStack is the Virtualized Infrastructure Manager (VIM) for Ultra-M and consists of these node types:

- Compute

- OSD - Compute

- Controller

- OpenStack Platform - Director (OSPD)

The high-level architecture of Ultra-M and the components involved are depicted in this image:

This document is intended for Cisco personnel who are familiar with the Cisco Ultra-M platform. It details the steps to be carried out at the OpenStack and StarOS VNF level at the time of the OSD-Compute server replacement.

Note: The procedures in this document are defined with reference to the Ultra M 5.1.x release.

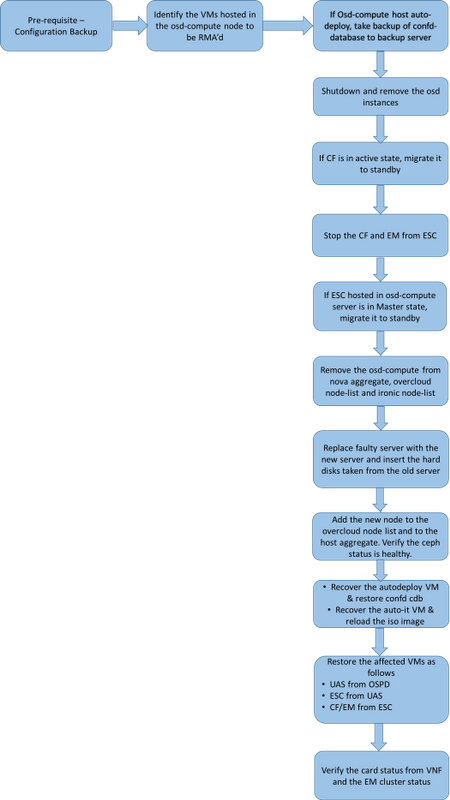

Workflow of the MoP

Abbreviations

| Abbreviation | Expansion |
| --- | --- |
| VNF | Virtual Network Function |
| CF | Control Function |
| SF | Service Function |
| ESC | Elastic Service Controller |
| MoP | Method of Procedure |
| OSD | Object Storage Disk |
| HDD | Hard Disk Drive |
| SSD | Solid State Drive |
| VIM | Virtualized Infrastructure Manager |
| VM | Virtual Machine |
| EM | Element Manager |
| UAS | Ultra Automation Services |
| UUID | Universally Unique Identifier |

Prerequisites

Backup OSPD

Before you replace an OSD-Compute node, check the current state of your Red Hat OpenStack Platform environment in order to avoid complications while the replacement is in progress.

So that the environment can be recovered if required, Cisco recommends that you take a backup of the OSPD database (DB) with these steps:

[root@director ~]# mysqldump --opt --all-databases > /root/undercloud-all-databases.sql

[root@director ~]# tar --xattrs -czf undercloud-backup-`date +%F`.tar.gz /root/undercloud-all-databases.sql /etc/my.cnf.d/server.cnf /var/lib/glance/images /srv/node /home/stack

tar: Removing leading `/' from member names

This process ensures that a node can be replaced without affecting the availability of any instances. It is also recommended to back up the StarOS configuration, especially if the OSD-Compute node to be replaced hosts the CF VM.
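Before you continue, it can help to confirm that the archive really contains the database dump. The function below is a minimal sketch; `verify_backup` is a hypothetical helper name and is not part of OSPD. It relies only on standard `tar` and `grep` options.

```shell
#!/bin/sh
# verify_backup (hypothetical helper): succeed only if the given .tar.gz
# archive contains the undercloud database dump.
verify_backup() {
    archive="$1"
    # tar strips the leading "/" from member names, so match on the file name.
    tar -tzf "$archive" | grep -q 'undercloud-all-databases\.sql$'
}
```

Example use on the director, assuming the archive name created above: `verify_backup undercloud-backup-$(date +%F).tar.gz && echo "backup contains the DB dump"`.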

Identify the VMs Hosted in the OSD-Compute Node

Identify the VMs that are hosted on the Compute server. There can be two possibilities:

The OSD-Compute server contains EM/UAS/Auto-Deploy/Auto-IT combination of VMs:

[stack@director ~]$ nova list --field name,host | grep osd-compute-0

| c6144778-9afd-4946-8453-78c817368f18 | AUTO-DEPLOY-VNF2-uas-0 | pod1-osd-compute-0.localdomain |

| 2d051522-bce2-4809-8d63-0c0e17f251dc | AUTO-IT-VNF2-uas-0 | pod1-osd-compute-0.localdomain |

| 507d67c2-1d00-4321-b9d1-da879af524f8 | VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea | pod1-osd-compute-0.localdomain |

| f5bd7b9c-476a-4679-83e5-303f0aae9309 | VNF2-UAS-uas-0 | pod1-osd-compute-0.localdomain |

The Compute server contains CF/ESC/EM/UAS combination of VMs:

[stack@director ~]$ nova list --field name,host | grep osd-compute-1

| 507d67c2-1d00-4321-b9d1-da879af524f8 | VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea | pod1-compute-8.localdomain |

| f9c0763a-4a4f-4bbd-af51-bc7545774be2 | VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229 | pod1-compute-8.localdomain |

| 75528898-ef4b-4d68-b05d-882014708694 | VNF2-ESC-ESC-0 | pod1-compute-8.localdomain |

| f5bd7b9c-476a-4679-83e5-303f0aae9309 | VNF2-UAS-uas-0 | pod1-compute-8.localdomain |

Note: In the output shown here, the first column corresponds to the UUID, the second column is the VM name and the third column is the hostname where the VM is present. The parameters from this output will be used in subsequent sections.
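Because the UUID and VM name are reused in later sections, they can be extracted from the table instead of copied by hand. This is a sketch only; `vms_on_host` is a hypothetical helper name, and it assumes the three-column table layout shown above.

```shell
#!/bin/sh
# vms_on_host (hypothetical helper): read `nova list --field name,host`
# output on stdin and print "<UUID> <VM name>" for each VM whose host
# column contains the string given as $1.
vms_on_host() {
    awk -F'|' -v host="$1" '
        NF >= 4 && index($4, host) > 0 {
            gsub(/ /, "", $2)   # UUID column
            gsub(/ /, "", $3)   # VM name column
            print $2, $3
        }'
}
```

Example use: `nova list --field name,host | vms_on_host osd-compute-0`.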

Verify that Ceph has available capacity in order to allow a single OSD server to be removed:

[root@pod1-osd-compute-1 ~]# sudo ceph df

GLOBAL:

SIZE AVAIL RAW USED %RAW USED

13393G 11804G 1589G 11.87

POOLS:

NAME ID USED %USED MAX AVAIL OBJECTS

rbd 0 0 0 3876G 0

metrics 1 4157M 0.10 3876G 215385

images 2 6731M 0.17 3876G 897

backups 3 0 0 3876G 0

volumes 4 399G 9.34 3876G 102373

vms 5 122G 3.06 3876G 31863
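The capacity check can be expressed as a simple threshold test on the %RAW USED value. In the output above, with three equally sized OSD hosts, the loss of one host leaves about two thirds of 13393G (roughly 8929G) of raw capacity, which is well above the 1589G in use. The sketch below assumes the `ceph df` layout shown above; `ceph_raw_used_below` is a hypothetical helper name, and the threshold passed to it is a site-specific choice, not a Cisco or Ceph recommendation.

```shell
#!/bin/sh
# ceph_raw_used_below (hypothetical helper): read `ceph df` output on stdin.
# Exit 0 if %RAW USED is below the limit in $1, 1 if it is not,
# and 2 if the value could not be found.
ceph_raw_used_below() {
    awk -v max="$1" '
        /%RAW USED/ { want = 1; next }                   # header of the GLOBAL section
        want && NF > 0 { pct = $NF; found = 1; exit }    # first data row after it
        END {
            if (!found) exit 2
            if (pct + 0 < max + 0) exit 0
            exit 1
        }'
}
```

Example use, with 50 as an arbitrary illustrative limit: `sudo ceph df | ceph_raw_used_below 50 && echo "capacity check passed"`.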

Verify ceph osd tree status is up on the OSD-Compute server:

[heat-admin@pod1-osd-compute-1 ~]$ sudo ceph osd tree

ID WEIGHT TYPE NAME UP/DOWN REWEIGHT PRIMARY-AFFINITY

-1 13.07996 root default

-2 4.35999 host pod1-osd-compute-0

0 1.09000 osd.0 up 1.00000 1.00000

3 1.09000 osd.3 up 1.00000 1.00000

6 1.09000 osd.6 up 1.00000 1.00000

9 1.09000 osd.9 up 1.00000 1.00000

-3 4.35999 host pod1-osd-compute-2

1 1.09000 osd.1 up 1.00000 1.00000

4 1.09000 osd.4 up 1.00000 1.00000

7 1.09000 osd.7 up 1.00000 1.00000

10 1.09000 osd.10 up 1.00000 1.00000

-4 4.35999 host pod1-osd-compute-1

2 1.09000 osd.2 up 1.00000 1.00000

5 1.09000 osd.5 up 1.00000 1.00000

8 1.09000 osd.8 up 1.00000 1.00000

11 1.09000 osd.11 up 1.00000 1.00000
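With twelve OSDs it is easy to miss one that is not up. The sketch below prints only the OSDs whose status column is not `up`; empty output means all OSDs are up. `osds_not_up` is a hypothetical helper name, and the column order is assumed to match the output shown above.

```shell
#!/bin/sh
# osds_not_up (hypothetical helper): read `ceph osd tree` output on stdin
# and print the name of every OSD whose UP/DOWN column is not "up".
osds_not_up() {
    awk '{
        for (i = 1; i < NF; i++)
            if ($i ~ /^osd\.[0-9]+$/ && $(i + 1) != "up")
                print $i
    }'
}
```

Example use: `sudo ceph osd tree | osds_not_up`.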

Verify that the Ceph processes are active on the OSD-Compute server:

[root@pod1-osd-compute-1 ~]# systemctl list-units *ceph*

UNIT LOAD ACTIVE SUB DESCRIPTION

var-lib-ceph-osd-ceph\x2d11.mount loaded active mounted /var/lib/ceph/osd/ceph-11

var-lib-ceph-osd-ceph\x2d2.mount loaded active mounted /var/lib/ceph/osd/ceph-2

var-lib-ceph-osd-ceph\x2d5.mount loaded active mounted /var/lib/ceph/osd/ceph-5

var-lib-ceph-osd-ceph\x2d8.mount loaded active mounted /var/lib/ceph/osd/ceph-8

ceph-osd@11.service loaded active running Ceph object storage daemon

ceph-osd@2.service loaded active running Ceph object storage daemon

ceph-osd@5.service loaded active running Ceph object storage daemon

ceph-osd@8.service loaded active running Ceph object storage daemon

system-ceph\x2ddisk.slice loaded active active system-ceph\x2ddisk.slice

system-ceph\x2dosd.slice loaded active active system-ceph\x2dosd.slice

ceph-mon.target loaded active active ceph target allowing to start/stop all ceph-mon@.service instances at once

ceph-osd.target loaded active active ceph target allowing to start/stop all ceph-osd@.service instances at once

ceph-radosgw.target loaded active active ceph target allowing to start/stop all ceph-radosgw@.service instances at once

ceph.target loaded active active ceph target allowing to start/stop all ceph*@.service instances at once

Disable and stop each Ceph instance and remove each instance from OSD and unmount the directory. Repeat for each Ceph instance:

[root@pod1-osd-compute-1 ~]# systemctl disable ceph-osd@11

[root@pod1-osd-compute-1 ~]# systemctl stop ceph-osd@11

[root@pod1-osd-compute-1 ~]# ceph osd out 11

marked out osd.11.

[root@pod1-osd-compute-1 ~]# ceph osd crush remove osd.11

removed item id 11 name 'osd.11' from crush map

[root@pod1-osd-compute-1 ~]# ceph auth del osd.11

updated

[root@pod1-osd-compute-1 ~]# ceph osd rm 11

removed osd.11

[root@pod1-osd-compute-1 ~]# umount /var/lib/ceph/osd/ceph-11

[root@pod1-osd-compute-1 ~]# rm -rf /var/lib/ceph/osd/ceph-11
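Because the same sequence is repeated for each OSD ID on the server, it can be useful to print the commands for review before anything is run. The sketch below only prints text and executes nothing; `osd_removal_plan` is a hypothetical helper name.

```shell
#!/bin/sh
# osd_removal_plan (hypothetical helper): PRINT, but do not run, the
# removal sequence from this procedure for the OSD ID given as $1.
osd_removal_plan() {
    id="$1"
    cat <<EOF
systemctl disable ceph-osd@${id}
systemctl stop ceph-osd@${id}
ceph osd out ${id}
ceph osd crush remove osd.${id}
ceph auth del osd.${id}
ceph osd rm ${id}
umount /var/lib/ceph/osd/ceph-${id}
rm -rf /var/lib/ceph/osd/ceph-${id}
EOF
}
```

Example use for the four OSDs of pod1-osd-compute-1: `for id in 2 5 8 11; do osd_removal_plan "$id"; done`.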

Alternatively, the clean.sh script can be used in order to perform this task:

[heat-admin@pod1-osd-compute-0 ~]$ sudo ls /var/lib/ceph/osd

ceph-11 ceph-3 ceph-6 ceph-8

[heat-admin@pod1-osd-compute-0 ~]$ /bin/sh clean.sh

[heat-admin@pod1-osd-compute-0 ~]$ cat clean.sh

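#!/bin/sh

# Trace each command as it runs.

set -x

# Each OSD has a directory named ceph-<id> under /var/lib/ceph/osd.

CEPH=`sudo ls /var/lib/ceph/osd`

for c in $CEPH

do

# Extract the numeric OSD ID from the directory name (ceph-11 -> 11).

i=`echo $c |cut -d'-' -f2`

# Braces, not parentheses, are used so that "exit 1" stops the script itself and not only a subshell.

sudo systemctl disable ceph-osd@$i || { echo "error rc:$?"; exit 1; }

sleep 2

sudo systemctl stop ceph-osd@$i || { echo "error rc:$?"; exit 1; }

sleep 2

sudo ceph osd out $i || { echo "error rc:$?"; exit 1; }

sleep 2

sudo ceph osd crush remove osd.$i || { echo "error rc:$?"; exit 1; }

sleep 2

sudo ceph auth del osd.$i || { echo "error rc:$?"; exit 1; }

sleep 2

sudo ceph osd rm $i || { echo "error rc:$?"; exit 1; }

sleep 2

sudo umount /var/lib/ceph/osd/$c || { echo "error rc:$?"; exit 1; }

sleep 2

sudo rm -rf /var/lib/ceph/osd/$c || { echo "error rc:$?"; exit 1; }

sleep 2

done

# Show the resulting OSD tree for verification.

sudo ceph osd tree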

After all OSD processes have been migrated/deleted, the node can be removed from the overcloud.

Note: When Ceph is removed, VNF HD RAID will go into the Degraded state but HD-disk must still be accessible.

Graceful Power Off

Case 1. OSD-Compute Node Hosts CF/ESC/EM/UAS

Migrate CF Card to Standby State

Log in to the StarOS VNF and identify the card that corresponds to the CF VM. Use the UUID of the CF VM identified from the section Identify the VMs hosted in the OSD-Compute Node, and find the card that corresponds to the UUID.

[local]VNF2# show card hardware

Tuesday May 08 16:49:42 UTC 2018

<snip>

Card 2:

Card Type : Control Function Virtual Card

CPU Packages : 8 [#0, #1, #2, #3, #4, #5, #6, #7]

CPU Nodes : 1

CPU Cores/Threads : 8

Memory : 16384M (qvpc-di-large)

UUID/Serial Number : F9C0763A-4A4F-4BBD-AF51-BC7545774BE2

<snip>
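The UUID from nova is in lowercase, while StarOS prints it in uppercase, so a case-insensitive match is needed. The sketch below assumes the `show card hardware` output has been saved to a file or is piped in, and that it follows the layout shown above; `card_for_uuid` is a hypothetical helper name.

```shell
#!/bin/sh
# card_for_uuid (hypothetical helper): read saved `show card hardware`
# output on stdin and print the card number whose UUID/Serial Number
# matches $1, ignoring case.
card_for_uuid() {
    awk -v want="$1" '
        $1 == "Card" && $2 ~ /^[0-9]+:$/ { card = $2; sub(/:$/, "", card) }
        index($0, "UUID/Serial Number") > 0 {
            if (toupper($NF) == toupper(want)) print card
        }'
}
```

Example use, where `card_hw.txt` is an assumed file name for the saved output: `card_for_uuid f9c0763a-4a4f-4bbd-af51-bc7545774be2 < card_hw.txt`.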

Check the status of the card:

[local]VNF2# show card table

Tuesday May 08 16:52:53 UTC 2018

Slot Card Type Oper State SPOF Attach

----------- -------------------------------------- ------------- ---- ------

1: CFC Control Function Virtual Card Standby -

2: CFC Control Function Virtual Card Active No

3: FC 4-Port Service Function Virtual Card Active No

4: FC 4-Port Service Function Virtual Card Active No

5: FC 4-Port Service Function Virtual Card Active No

6: FC 4-Port Service Function Virtual Card Active No

7: FC 4-Port Service Function Virtual Card Active No

8: FC 4-Port Service Function Virtual Card Active No

9: FC 4-Port Service Function Virtual Card Active No

10: FC 4-Port Service Function Virtual Card Standby -

If the card is in the active state, move the card to standby state:

[local]VNF2# card migrate from 2 to 1

Shutdown CF and EM VM from ESC

Log in to the ESC node that corresponds to the VNF and check the status of the VMs:

[admin@VNF2-esc-esc-0 ~]$ cd /opt/cisco/esc/esc-confd/esc-cli

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli get esc_datamodel | egrep --color "<state>|<vm_name>|<vm_id>|<deployment_name>"

<snip>

<state>SERVICE_ACTIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229</vm_name>

<state>VM_ALIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_c3_0_3e0db133-c13b-4e3d-ac14-

<state>VM_ALIVE_STATE</state>

<deployment_name>VNF2-DEPLOYMENT-em</deployment_name>

<vm_id>507d67c2-1d00-4321-b9d1-da879af524f8</vm_id>

<vm_id>dc168a6a-4aeb-4e81-abd9-91d7568b5f7c</vm_id>

<vm_id>9ffec58b-4b9d-4072-b944-5413bf7fcf07</vm_id>

<state>SERVICE_ACTIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea</vm_name>

<state>VM_ALIVE_STATE</state>

<snip>

Stop the CF and EM VMs one by one with the use of their VM names, as noted in the section Identify the VMs Hosted in the OSD-Compute Node:

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli vm-action STOP VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli vm-action STOP VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea

After it stops, the VMs must enter the SHUTOFF state:

[admin@VNF2-esc-esc-0 ~]$ cd /opt/cisco/esc/esc-confd/esc-cli

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli get esc_datamodel | egrep --color "<state>|<vm_name>|<vm_id>|<deployment_name>"

<snip>

<state>SERVICE_ACTIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229</vm_name>

<state>VM_SHUTOFF_STATE</state>

<vm_name>VNF2-DEPLOYM_c3_0_3e0db133-c13b-4e3d-ac14-

<state>VM_ALIVE_STATE</state>

<deployment_name>VNF2-DEPLOYMENT-em</deployment_name>

<vm_id>507d67c2-1d00-4321-b9d1-da879af524f8</vm_id>

<vm_id>dc168a6a-4aeb-4e81-abd9-91d7568b5f7c</vm_id>

<vm_id>9ffec58b-4b9d-4072-b944-5413bf7fcf07</vm_id>

<state>SERVICE_ACTIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea</vm_name>

<state>VM_SHUTOFF_STATE</state>

<snip>
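The state of a single VM can be picked out of the filtered data model output rather than read by eye. The sketch below assumes, as in the output shown above, that the first `<state>` line after a `<vm_name>` line belongs to that VM; `esc_vm_state` is a hypothetical helper name.

```shell
#!/bin/sh
# esc_vm_state (hypothetical helper): read the egrep-filtered output of
# `esc_nc_cli get esc_datamodel` on stdin and print the state that follows
# the <vm_name> element equal to $1.
esc_vm_state() {
    awk -v name="$1" '
        found && /<state>/ {
            gsub(/.*<state>|<\/state>.*/, "")   # keep only the element text
            print
            exit
        }
        index($0, "<vm_name>" name "</vm_name>") > 0 { found = 1 }'
}
```

Example use: pipe the filtered `./esc_nc_cli get esc_datamodel` output into `esc_vm_state <VM name>` and confirm that it prints `VM_SHUTOFF_STATE`.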

Migrate ESC to Standby Mode

Log in to the ESC hosted in the compute node and check if it is in the master state. If yes, switch the ESC to standby mode:

[admin@VNF2-esc-esc-0 esc-cli]$ escadm status

0 ESC status=0 ESC Master Healthy

[admin@VNF2-esc-esc-0 ~]$ sudo service keepalived stop

Stopping keepalived: [ OK ]

[admin@VNF2-esc-esc-0 ~]$ escadm status

1 ESC status=0 In SWITCHING_TO_STOP state. Please check status after a while.

[admin@VNF2-esc-esc-0 ~]$ sudo reboot

Broadcast message from admin@vnf1-esc-esc-0.novalocal

(/dev/pts/0) at 13:32 ...

The system is going down for reboot NOW!

Remove the OSD-Compute Node from Nova Aggregate List

List the nova aggregates and identify the aggregate that corresponds to the Compute server based on the VNF hosted by it. Usually, it would be of the format <VNFNAME>-EM-MGMT<X> and <VNFNAME>-CF-MGMT<X>:

[stack@director ~]$ nova aggregate-list

+----+-------------------+-------------------+

| Id | Name | Availability Zone |

+----+-------------------+-------------------+

| 29 | POD1-AUTOIT | mgmt |

| 57 | VNF1-SERVICE1 | - |

| 60 | VNF1-EM-MGMT1 | - |

| 63 | VNF1-CF-MGMT1 | - |

| 66 | VNF2-CF-MGMT2 | - |

| 69 | VNF2-EM-MGMT2 | - |

| 72 | VNF2-SERVICE2 | - |

| 75 | VNF3-CF-MGMT3 | - |

| 78 | VNF3-EM-MGMT3 | - |

| 81 | VNF3-SERVICE3 | - |

+----+-------------------+-------------------+

In this case, the OSD-Compute server belongs to VNF2. So, the aggregates that correspond would be VNF2-CF-MGMT2 and VNF2-EM-MGMT2.

Remove the OSD-Compute node from the aggregate identified:

nova aggregate-remove-host <Aggregate> <Host>

[stack@director ~]$ nova aggregate-remove-host VNF2-CF-MGMT2 pod1-osd-compute-0.localdomain

[stack@director ~]$ nova aggregate-remove-host VNF2-EM-MGMT2 pod1-osd-compute-0.localdomain

[stack@director ~]$ nova aggregate-remove-host POD1-AUTOIT pod1-osd-compute-0.localdomain

Verify that the OSD-Compute node has been removed from the aggregates. The host must no longer be listed under any of them:

nova aggregate-show <aggregate-name>

[stack@director ~]$ nova aggregate-show VNF2-CF-MGMT2

[stack@director ~]$ nova aggregate-show VNF2-EM-MGMT2

[stack@director ~]$ nova aggregate-show POD1-AUTOIT

Case 2. OSD-Compute Node Hosts Auto-Deploy/Auto-IT/EM/UAS

Backup the CDB of Auto-Deploy

Back up the Auto-Deploy confd CDB data periodically, or after every activation/deactivation, and save the file to a backup server. Auto-Deploy is not redundant and if this data is lost, it will be difficult to deactivate the deployment.

Log in to the Auto-Deploy VM and back up the confd cdb directory:

ubuntu@auto-deploy-iso-2007-uas-0:~$ sudo -i

root@auto-deploy-iso-2007-uas-0:~# service uas-confd stop

uas-confd stop/waiting

root@auto-deploy-iso-2007-uas-0:~# cd /opt/cisco/usp/uas/confd-6.3.1/var/confd

root@auto-deploy-iso-2007-uas-0:/opt/cisco/usp/uas/confd-6.3.1/var/confd# tar cvf autodeploy_cdb_backup.tar cdb/

cdb/

cdb/O.cdb

cdb/C.cdb

cdb/aaa_init.xml

cdb/A.cdb

root@auto-deploy-iso-2007-uas-0:~# service uas-confd start

uas-confd start/running, process 13852

Note: Copy autodeploy_cdb_backup.tar to the backup server.

Backup system.cfg from Auto-IT

Back up the system.cfg file to the backup server:

Auto-it = 10.1.1.2

Backup server = 10.2.2.2

[stack@director ~]$ ssh ubuntu@10.1.1.2

ubuntu@10.1.1.2's password:

Welcome to Ubuntu 14.04.3 LTS (GNU/Linux 3.13.0-76-generic x86_64)

* Documentation: https://help.ubuntu.com/

System information as of Wed Jun 13 16:21:34 UTC 2018

System load: 0.02 Processes: 87

Usage of /: 15.1% of 78.71GB Users logged in: 0

Memory usage: 13% IP address for eth0: 172.16.182.4

Swap usage: 0%

Graph this data and manage this system at:

https://landscape.canonical.com/

Get cloud support with Ubuntu Advantage Cloud Guest:

http://www.ubuntu.com/business/services/cloud

Cisco Ultra Services Platform (USP)

Build Date: Wed Feb 14 12:58:22 EST 2018

Description: UAS build assemble-uas#1891

sha1: bf02ced

ubuntu@auto-it-vnf-uas-0:~$ scp -r /opt/cisco/usp/uploads/system.cfg root@10.2.2.2:/home/stack

root@10.2.2.2's password:

system.cfg 100% 565 0.6KB/s 00:00

ubuntu@auto-it-vnf-uas-0:~$

Note: The procedures for a graceful shutdown of the EM/UAS hosted on OSD-Compute-0 are the same in both cases. Refer to Case 1 for these steps.

OSD-Compute Node Deletion

The steps mentioned in this section are common irrespective of the VMs hosted in the compute node.

Delete OSD-Compute Node from the Service List

Delete the Compute service from the service list:

[stack@director ~]$ source corerc

[stack@director ~]$ openstack compute service list | grep osd-compute-0

| 404 | nova-compute | pod1-osd-compute-0.localdomain | nova | enabled | up | 2018-05-08T18:40:56.000000 |

openstack compute service delete <ID>

[stack@director ~]$ openstack compute service delete 404

Delete Neutron Agents

Delete the old associated neutron agent and open vswitch agent for the Compute server:

[stack@director ~]$ openstack network agent list | grep osd-compute-0

| c3ee92ba-aa23-480c-ac81-d3d8d01dcc03 | Open vSwitch agent | pod1-osd-compute-0.localdomain | None | False | UP | neutron-openvswitch-agent |

| ec19cb01-abbb-4773-8397-8739d9b0a349 | NIC Switch agent | pod1-osd-compute-0.localdomain | None | False | UP | neutron-sriov-nic-agent |

openstack network agent delete <ID>

[stack@director ~]$ openstack network agent delete c3ee92ba-aa23-480c-ac81-d3d8d01dcc03

[stack@director ~]$ openstack network agent delete ec19cb01-abbb-4773-8397-8739d9b0a349

Delete from the Nova and Ironic Database

Delete a node from the nova list and the ironic database and verify it:

[stack@director ~]$ source stackrc

[stack@al01-pod1-ospd ~]$ nova list | grep osd-compute-0

| c2cfa4d6-9c88-4ba0-9970-857d1a18d02c | pod1-osd-compute-0 | ACTIVE | - | Running | ctlplane=192.200.0.114 |

[stack@al01-pod1-ospd ~]$ nova delete c2cfa4d6-9c88-4ba0-9970-857d1a18d02c

nova show <compute-node> | grep hypervisor

[stack@director ~]$ nova show pod1-osd-compute-0 | grep hypervisor

| OS-EXT-SRV-ATTR:hypervisor_hostname | 4ab21917-32fa-43a6-9260-02538b5c7a5a

ironic node-delete <ID>

[stack@director ~]$ ironic node-delete 4ab21917-32fa-43a6-9260-02538b5c7a5a

[stack@director ~]$ ironic node-list (the deleted node must not be listed now)

Delete from Overcloud

Create a script file named delete_node.sh with the contents as shown. Ensure that the templates mentioned are the same as the ones used in the deploy.sh script used for the stack deployment:

delete_node.sh

openstack overcloud node delete --templates -e /usr/share/openstack-tripleo-heat-templates/environments/puppet-pacemaker.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/network-isolation.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/storage-environment.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/neutron-sriov.yaml -e /home/stack/custom-templates/network.yaml -e /home/stack/custom-templates/ceph.yaml -e /home/stack/custom-templates/compute.yaml -e /home/stack/custom-templates/layout.yaml -e /home/stack/custom-templates/layout.yaml --stack <stack-name> <UUID>

[stack@director ~]$ source stackrc

[stack@director ~]$ /bin/sh delete_node.sh

+ openstack overcloud node delete --templates -e /usr/share/openstack-tripleo-heat-templates/environments/puppet-pacemaker.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/network-isolation.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/storage-environment.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/neutron-sriov.yaml -e /home/stack/custom-templates/network.yaml -e /home/stack/custom-templates/ceph.yaml -e /home/stack/custom-templates/compute.yaml -e /home/stack/custom-templates/layout.yaml -e /home/stack/custom-templates/layout.yaml --stack pod1 49ac5f22-469e-4b84-badc-031083db0533

Deleting the following nodes from stack pod1:

- 49ac5f22-469e-4b84-badc-031083db0533

Started Mistral Workflow. Execution ID: 4ab4508a-c1d5-4e48-9b95-ad9a5baa20ae

real 0m52.078s

user 0m0.383s

sys 0m0.086s

Wait for the OpenStack stack operation to move to the COMPLETE state:

[stack@director ~]$ openstack stack list

+--------------------------------------+------------+-----------------+----------------------+----------------------+

| ID | Stack Name | Stack Status | Creation Time | Updated Time |

+--------------------------------------+------------+-----------------+----------------------+----------------------+

| 5df68458-095d-43bd-a8c4-033e68ba79a0 | pod1 | UPDATE_COMPLETE | 2018-05-08T21:30:06Z | 2018-05-08T20:42:48Z |

+--------------------------------------+------------+-----------------+----------------------+----------------------+
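The wait for the COMPLETE state can be scripted by reading the status column from the table. The sketch below assumes the five-column layout shown above; `stack_status` is a hypothetical helper name.

```shell
#!/bin/sh
# stack_status (hypothetical helper): read `openstack stack list` output
# on stdin and print the Stack Status of the stack named in $1.
stack_status() {
    awk -F'|' -v stack="$1" '
        NF >= 4 {
            name = $3; gsub(/ /, "", name)      # Stack Name column
            if (name == stack) {
                st = $4; gsub(/ /, "", st)      # Stack Status column
                print st
            }
        }'
}
```

Example use: `until openstack stack list | stack_status pod1 | grep -q 'COMPLETE$'; do sleep 60; done`. This loop does not stop on a FAILED status, so check the printed status if it runs for longer than expected.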

Install the New Compute Node

- The steps to install a new UCS C240 M4 server and the initial setup steps are described in:

Cisco UCS C240 M4 Server Installation and Service Guide

- After the installation of the server, insert the hard disks in the same slots as in the old server

- Log in to the server with the use of the CIMC IP

- Perform a BIOS upgrade if the firmware is not the recommended version used previously. Steps for the BIOS upgrade are given here:

Cisco UCS C-Series Rack-Mount Server BIOS Upgrade Guide

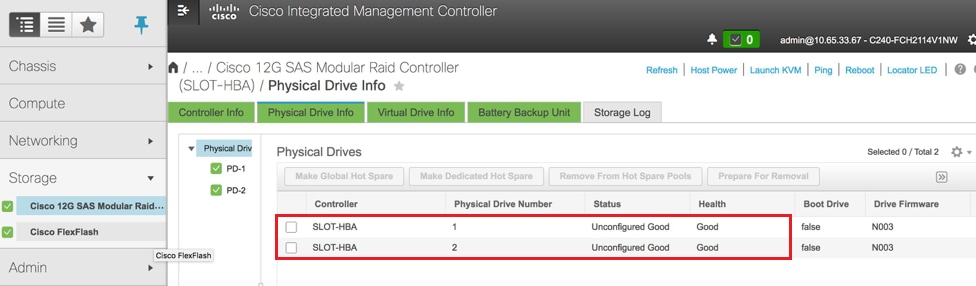

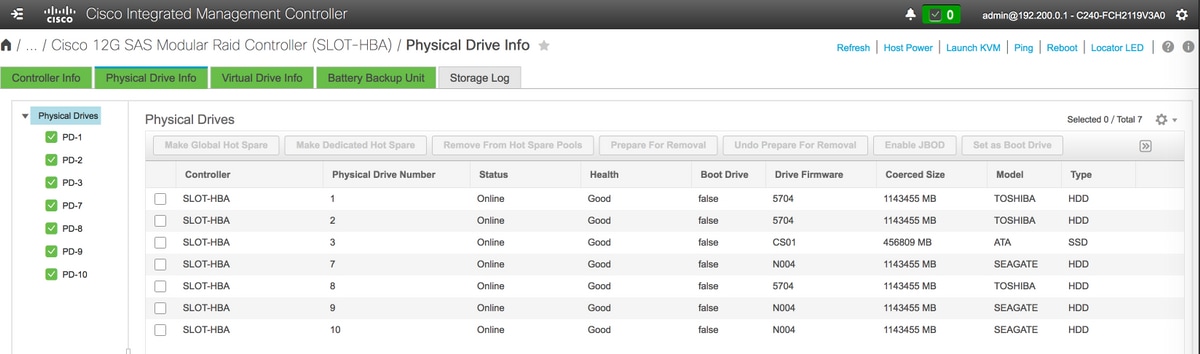

- Verify the status of Physical Drives. It must be Unconfigured Good

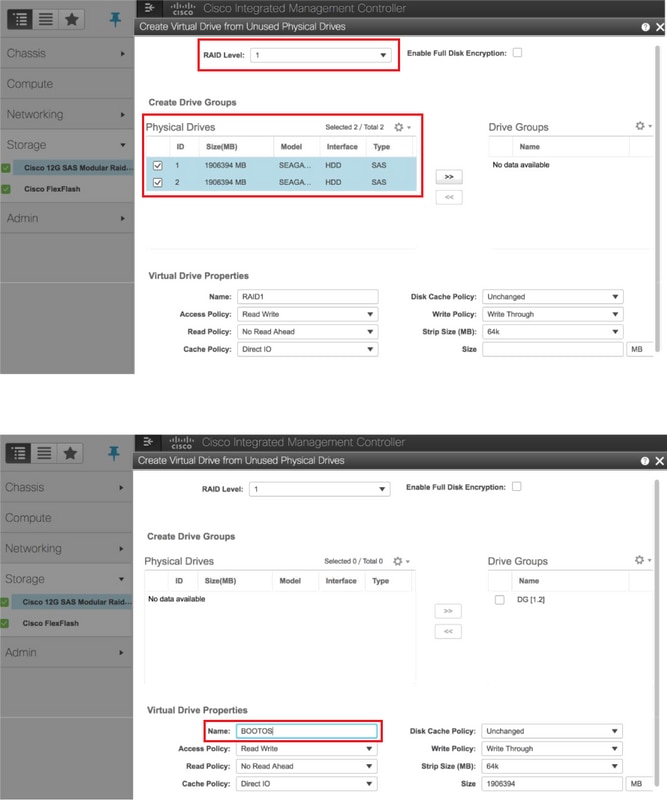

- Create a Virtual drive from the physical drives with RAID Level 1

Storage > Cisco 12G SAS Modular Raid Controller (SLOT-HBA) > Physical Drive Info

Note: This image is for illustration purposes only. In the actual OSD-Compute CIMC, you see seven physical drives in slots (1,2,3,7,8,9,10) in the Unconfigured Good state, as no virtual drives are created from them.

Storage > Cisco 12G SAS Modular Raid Controller (SLOT-HBA) > Controller Info > Create Virtual Drive from Unused Physical Drives

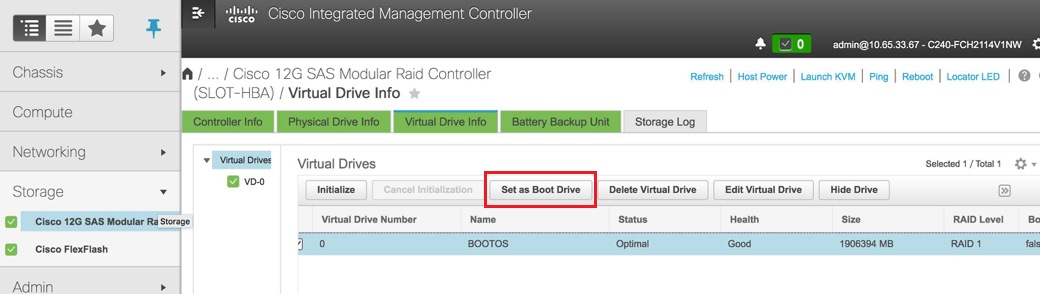

Select the VD and configure “Set as Boot Drive”

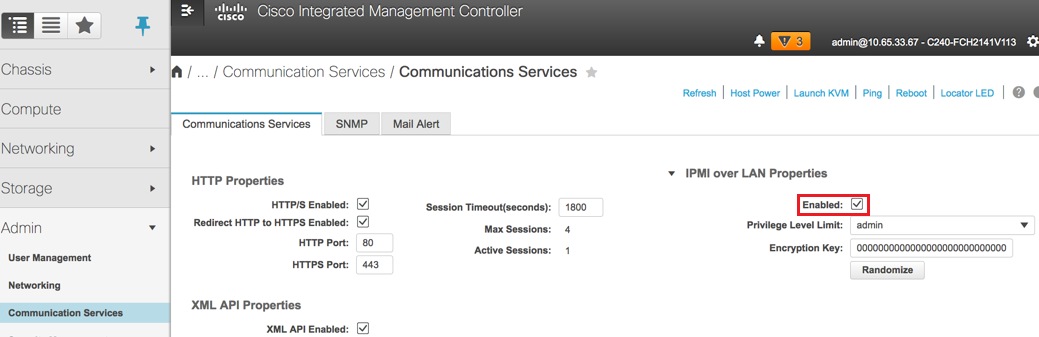

Enable IPMI over LAN: Admin > Communication Services > Communication Services

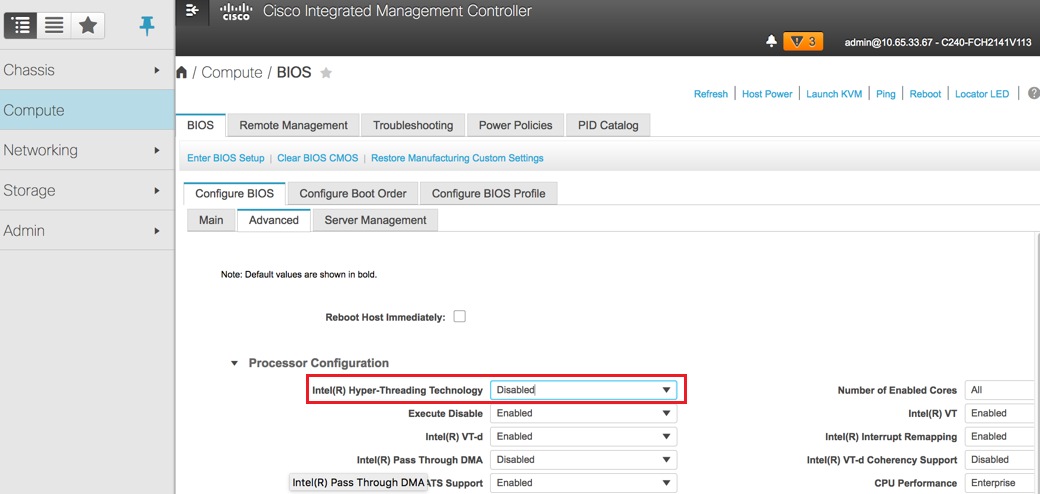

Disable hyperthreading: Compute > BIOS > Configure BIOS > Advanced > Processor Configuration

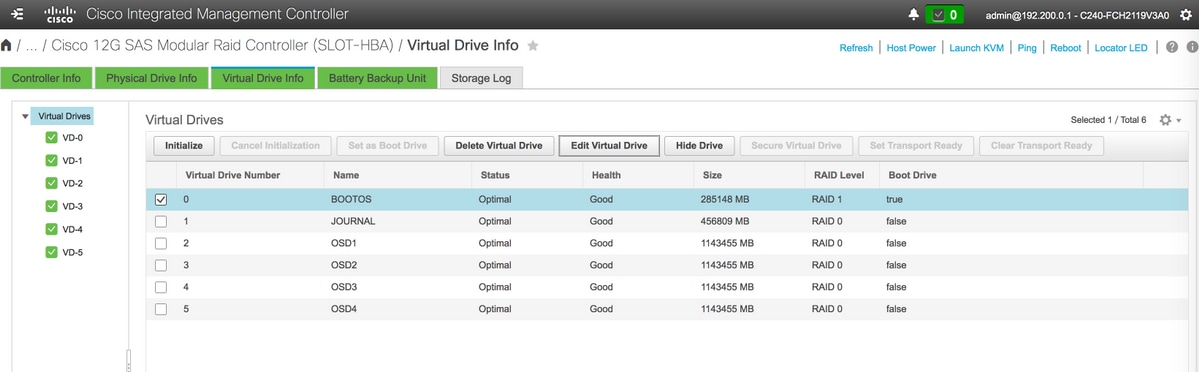

- Similar to the BOOTOS VD created with physical drives 1 and 2, create five more virtual drives as follows:

JOURNAL > From physical drive number 3

OSD1 > From physical drive number 7

OSD2 > From physical drive number 8

OSD3 > From physical drive number 9

OSD4 > From physical drive number 10

- At the end, the physical drives and virtual drives must be similar to those shown in the image:

Virtual drives

Physical drives

Note: The image shown here and the configuration steps mentioned in this section are with reference to the firmware version 3.0(3e) and there might be slight variations if you work on other versions.

Add the New OSD-Compute Node to the Overcloud

The steps mentioned in this section are common irrespective of the VM hosted by the compute node.

Add Compute server with a different index.

Create an add_node.json file with only the details of the new OSD-Compute server to be added. Ensure that the index number for the new OSD-Compute server has not been used before. Typically, increment the highest existing index by one.

Example: The highest prior index was OSD-Compute-2, so OSD-Compute-3 was created in the case of a 2-VNF system.
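The next index can be derived from the node names that currently exist. The sketch below is illustrative only; `next_osd_compute_index` is a hypothetical helper name. It sees only nodes that are still listed, so an index that was used and deleted earlier is not detected unless you check for it separately.

```shell
#!/bin/sh
# next_osd_compute_index (hypothetical helper): read a node listing on stdin
# (for example `nova list` output) and print the highest osd-compute index
# found plus one, or 0 if no osd-compute node is listed.
next_osd_compute_index() {
    awk '
        match($0, /osd-compute-[0-9]+/) {
            # "osd-compute-" is 12 characters long; the digits follow it.
            n = substr($0, RSTART + 12, RLENGTH - 12) + 0
            if (n > max) max = n
            seen = 1
        }
        END { print (seen ? max + 1 : 0) }'
}
```

Example use on the director after `source stackrc`: `nova list | next_osd_compute_index`.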

Note: Be mindful of the JSON format.

[stack@director ~]$ cat add_node.json

{

"nodes":[

{

"mac":[

"<MAC_ADDRESS>"

],

"capabilities": "node:osd-compute-3,boot_option:local",

"cpu":"24",

"memory":"256000",

"disk":"3000",

"arch":"x86_64",

"pm_type":"pxe_ipmitool",

"pm_user":"admin",

"pm_password":"<PASSWORD>",

"pm_addr":"192.100.0.5"

}

]

}

Import the json file:

[stack@director ~]$ openstack baremetal import --json add_node.json

Started Mistral Workflow. Execution ID: 78f3b22c-5c11-4d08-a00f-8553b09f497d

Successfully registered node UUID 7eddfa87-6ae6-4308-b1d2-78c98689a56e

Started Mistral Workflow. Execution ID: 33a68c16-c6fd-4f2a-9df9-926545f2127e

Successfully set all nodes to available.

Run node introspection with the use of the UUID noted from the previous step:

[stack@director ~]$ openstack baremetal node manage 7eddfa87-6ae6-4308-b1d2-78c98689a56e

[stack@director ~]$ ironic node-list |grep 7eddfa87

| 7eddfa87-6ae6-4308-b1d2-78c98689a56e | None | None | power off | manageable | False |

[stack@director ~]$ openstack overcloud node introspect 7eddfa87-6ae6-4308-b1d2-78c98689a56e --provide

Started Mistral Workflow. Execution ID: e320298a-6562-42e3-8ba6-5ce6d8524e5c

Waiting for introspection to finish...

Successfully introspected all nodes.

Introspection completed.

Started Mistral Workflow. Execution ID: c4a90d7b-ebf2-4fcb-96bf-e3168aa69dc9

Successfully set all nodes to available.

[stack@director ~]$ ironic node-list |grep available

| 7eddfa87-6ae6-4308-b1d2-78c98689a56e | None | None | power off | available | False |

Add IP addresses to custom-templates/layout.yaml under OsdComputeIPs. In this case, because OSD-Compute-0 is replaced, add its address to the end of the list for each type:

OsdComputeIPs:

  internal_api:

  - 11.120.0.43

  - 11.120.0.44

  - 11.120.0.45

  - 11.120.0.43   # <<< take osd-compute-0 .43 and add here

  tenant:

  - 11.117.0.43

  - 11.117.0.44

  - 11.117.0.45

  - 11.117.0.43   # << and here

  storage:

  - 11.118.0.43

  - 11.118.0.44

  - 11.118.0.45

  - 11.118.0.43   # << and here

  storage_mgmt:

  - 11.119.0.43

  - 11.119.0.44

  - 11.119.0.45

  - 11.119.0.43   # << and here

Run deploy.sh script that was previously used to deploy the stack, in order to add the new compute node to the overcloud stack:

[stack@director ~]$ ./deploy.sh

++ openstack overcloud deploy --templates -r /home/stack/custom-templates/custom-roles.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/puppet-pacemaker.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/network-isolation.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/storage-environment.yaml -e /usr/share/openstack-tripleo-heat-templates/environments/neutron-sriov.yaml -e /home/stack/custom-templates/network.yaml -e /home/stack/custom-templates/ceph.yaml -e /home/stack/custom-templates/compute.yaml -e /home/stack/custom-templates/layout.yaml --stack ADN-ultram --debug --log-file overcloudDeploy_11_06_17__16_39_26.log --ntp-server 172.24.167.109 --neutron-flat-networks phys_pcie1_0,phys_pcie1_1,phys_pcie4_0,phys_pcie4_1 --neutron-network-vlan-ranges datacentre:1001:1050 --neutron-disable-tunneling --verbose --timeout 180

…

Starting new HTTP connection (1): 192.200.0.1

"POST /v2/action_executions HTTP/1.1" 201 1695

HTTP POST http://192.200.0.1:8989/v2/action_executions 201

Overcloud Endpoint: http://10.1.2.5:5000/v2.0

Overcloud Deployed

clean_up DeployOvercloud:

END return value: 0

real 38m38.971s

user 0m3.605s

sys 0m0.466s

Wait for the OpenStack stack status to be COMPLETE:

[stack@director ~]$ openstack stack list

+--------------------------------------+------------+-----------------+----------------------+----------------------+

| ID | Stack Name | Stack Status | Creation Time | Updated Time |

+--------------------------------------+------------+-----------------+----------------------+----------------------+

| 5df68458-095d-43bd-a8c4-033e68ba79a0 | pod1 | UPDATE_COMPLETE | 2017-11-02T21:30:06Z | 2017-11-06T21:40:58Z |

+--------------------------------------+------------+-----------------+----------------------+----------------------+

Check that the new OSD-Compute node is in the Active state:

[stack@director ~]$ source stackrc

[stack@director ~]$ nova list |grep osd-compute-3

| 0f2d88cd-d2b9-4f28-b2ca-13e305ad49ea | pod1-osd-compute-3 | ACTIVE | - | Running | ctlplane=192.200.0.117 |

[stack@director ~]$ source corerc

[stack@director ~]$ openstack hypervisor list |grep osd-compute-3

| 63 | pod1-osd-compute-3.localdomain |

Log in to the new OSD-Compute server and check the Ceph processes. Initially, the status is HEALTH_WARN as Ceph recovers:

[heat-admin@pod1-osd-compute-3 ~]$ sudo ceph -s

cluster eb2bb192-b1c9-11e6-9205-525400330666

health HEALTH_WARN

223 pgs backfill_wait

4 pgs backfilling

41 pgs degraded

227 pgs stuck unclean

41 pgs undersized

recovery 45229/1300136 objects degraded (3.479%)

recovery 525016/1300136 objects misplaced (40.382%)

monmap e1: 3 mons at {Pod1-controller-0=11.118.0.40:6789/0,Pod1-controller-1=11.118.0.41:6789/0,Pod1-controller-2=11.118.0.42:6789/0}

election epoch 58, quorum 0,1,2 Pod1-controller-0,Pod1-controller-1,Pod1-controller-2

osdmap e986: 12 osds: 12 up, 12 in; 225 remapped pgs

flags sortbitwise,require_jewel_osds

pgmap v781746: 704 pgs, 6 pools, 533 GB data, 344 kobjects

1553 GB used, 11840 GB / 13393 GB avail

45229/1300136 objects degraded (3.479%)

525016/1300136 objects misplaced (40.382%)

477 active+clean

186 active+remapped+wait_backfill

37 active+undersized+degraded+remapped+wait_backfill

4 active+undersized+degraded+remapped+backfilling

After a short period (20 minutes), Ceph returns to the HEALTH_OK state:

[heat-admin@pod1-osd-compute-3 ~]$ sudo ceph -s

cluster eb2bb192-b1c9-11e6-9205-525400330666

health HEALTH_OK

monmap e1: 3 mons at {Pod1-controller-0=11.118.0.40:6789/0,Pod1-controller-1=11.118.0.41:6789/0,Pod1-controller-2=11.118.0.42:6789/0}

election epoch 58, quorum 0,1,2 Pod1-controller-0,Pod1-controller-1,Pod1-controller-2

osdmap e1398: 12 osds: 12 up, 12 in

flags sortbitwise,require_jewel_osds

pgmap v784311: 704 pgs, 6 pools, 533 GB data, 344 kobjects

1599 GB used, 11793 GB / 13393 GB avail

704 active+clean

client io 8168 kB/s wr, 0 op/s rd, 32 op/s wr

[heat-admin@pod1-osd-compute-3 ~]$ sudo ceph osd tree

ID WEIGHT TYPE NAME UP/DOWN REWEIGHT PRIMARY-AFFINITY

-1 13.07996 root default

-2 0 host pod1-osd-compute-0

-3 4.35999 host pod1-osd-compute-2

1 1.09000 osd.1 up 1.00000 1.00000

4 1.09000 osd.4 up 1.00000 1.00000

7 1.09000 osd.7 up 1.00000 1.00000

10 1.09000 osd.10 up 1.00000 1.00000

-4 4.35999 host pod1-osd-compute-1

2 1.09000 osd.2 up 1.00000 1.00000

5 1.09000 osd.5 up 1.00000 1.00000

8 1.09000 osd.8 up 1.00000 1.00000

11 1.09000 osd.11 up 1.00000 1.00000

-5 4.35999 host pod1-osd-compute-3

0 1.09000 osd.0 up 1.00000 1.00000

3 1.09000 osd.3 up 1.00000 1.00000

6 1.09000 osd.6 up 1.00000 1.00000

9 1.09000 osd.9 up 1.00000 1.00000
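The wait for Ceph to return to HEALTH_OK can be scripted by reading the health line from the status output. The sketch below assumes the `ceph -s` layout shown above, where the value follows the word `health` on the same line; `ceph_health` is a hypothetical helper name.

```shell
#!/bin/sh
# ceph_health (hypothetical helper): read `ceph -s` output on stdin and
# print the value on the "health" line, for example HEALTH_OK.
ceph_health() {
    awk '$1 == "health" { print $2; exit }'
}
```

Example use: `until [ "$(sudo ceph -s | ceph_health)" = "HEALTH_OK" ]; do sleep 60; done`.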

Post Server Replacement Settings

After adding the server to the overcloud, please refer to the link below to apply the settings that were previously present in the old server:

Restore the VMs

Case 1. OSD-Compute Node Hosting CF, ESC, EM and UAS

Addition to Nova Aggregate List

Add the OSD-Compute node to the aggregate-hosts and verify if the host has been added. In this case, the OSD-Compute node must be added to both the CF and EM host aggregates.

nova aggregate-add-host <Aggregate> <Host>

[stack@director ~]$ nova aggregate-add-host VNF2-CF-MGMT2 pod1-osd-compute-3.localdomain

[stack@director ~]$ nova aggregate-add-host VNF2-EM-MGMT2 pod1-osd-compute-3.localdomain

[stack@director ~]$ nova aggregate-add-host POD1-AUTOIT pod1-osd-compute-3.localdomain

nova aggregate-show <Aggregate>

[stack@director ~]$ nova aggregate-show VNF2-CF-MGMT2

[stack@director ~]$ nova aggregate-show VNF2-EM-MGMT2

[stack@director ~]$ nova aggregate-show POD1-AUTOIT
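
When several aggregates are involved, it can help to generate the command list once and review it before you run it. The following is a minimal Python sketch under that assumption; the helper name `aggregate_commands` is hypothetical, and it only builds the `nova aggregate-add-host` and `nova aggregate-show` command strings used above without executing them.

```python
import shlex

def aggregate_commands(host, aggregates):
    """Build the add-host commands, then the show commands, for each aggregate."""
    commands = []
    for aggregate in aggregates:
        commands.append(shlex.join(["nova", "aggregate-add-host", aggregate, host]))
    for aggregate in aggregates:
        commands.append(shlex.join(["nova", "aggregate-show", aggregate]))
    return commands

# Host and aggregate names are the ones used in this document's example.
for line in aggregate_commands(
    "pod1-osd-compute-3.localdomain",
    ["VNF2-CF-MGMT2", "VNF2-EM-MGMT2", "POD1-AUTOIT"],
):
    print(line)
```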

Recovery of UAS VM

Check the status of the UAS VM in the nova list and delete it:

[stack@director ~]$ nova list | grep VNF2-UAS-uas-0

| 307a704c-a17c-4cdc-8e7a-3d6e7e4332fa | VNF2-UAS-uas-0 | ACTIVE | - | Running | VNF2-UAS-uas-orchestration=172.168.11.10; VNF2-UAS-uas-management=172.168.10.3

[stack@director ~]$ nova delete VNF2-UAS-uas-0

Request to delete server VNF2-UAS-uas-0 has been accepted.

In order to recover the autovnf-uas VM, run the uas-check script to check the state. It must report an ERROR. Then run the script again with the --fix option in order to recreate the missing UAS VM:

[stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts/

[stack@director scripts]$ ./uas-check.py auto-vnf VNF2-UAS

2017-12-08 12:38:05,446 - INFO: Check of AutoVNF cluster started

2017-12-08 12:38:07,925 - INFO: Instance 'vnf1-UAS-uas-0' status is 'ERROR'

2017-12-08 12:38:07,925 - INFO: Check completed, AutoVNF cluster has recoverable errors

[stack@director scripts]$ ./uas-check.py auto-vnf VNF2-UAS --fix

2017-11-22 14:01:07,215 - INFO: Check of AutoVNF cluster started

2017-11-22 14:01:09,575 - INFO: Instance 'VNF2-UAS-uas-0' status is 'ERROR'

2017-11-22 14:01:09,575 - INFO: Check completed, AutoVNF cluster has recoverable errors

2017-11-22 14:01:09,778 - INFO: Removing instance 'VNF2-UAS-uas-0'

2017-11-22 14:01:13,568 - INFO: Removed instance 'VNF2-UAS-uas-0'

2017-11-22 14:01:13,568 - INFO: Creating instance 'VNF2-UAS-uas-0' and attaching volume 'VNF2-UAS-uas-vol-0'

2017-11-22 14:01:49,525 - INFO: Created instance 'VNF2-UAS-uas-0'

Log in to autovnf-uas. Wait for a few minutes and UAS must return to the good state:

VNF2-autovnf-uas-0#show uas

uas version 1.0.1-1

uas state ha-active

uas ha-vip 172.17.181.101

INSTANCE IP STATE ROLE

-----------------------------------

172.17.180.6 alive CONFD-SLAVE

172.17.180.7 alive CONFD-MASTER

172.17.180.9 alive NA
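
The check of the `show uas` output can be sketched in Python as follows. This is an illustration only: the function names are hypothetical, the parser assumes the three-column instance table shown above, and it treats "all instances alive" as the good state, which is an assumption drawn from this example rather than a documented criterion.

```python
import re

# One table row: IPv4 address, state, role (for example "172.17.180.6 alive CONFD-SLAVE").
ROW = re.compile(r"^\s*(\d{1,3}(?:\.\d{1,3}){3})\s+(\S+)\s+(\S+)\s*$")

def parse_uas_instances(show_uas_text):
    """Return a list of (ip, state, role) tuples from `show uas` output."""
    rows = []
    for line in show_uas_text.splitlines():
        match = ROW.match(line)
        if match:
            rows.append(match.groups())
    return rows

def uas_all_alive(show_uas_text):
    """True when at least one instance is listed and every instance is alive."""
    rows = parse_uas_instances(show_uas_text)
    return bool(rows) and all(state == "alive" for _, state, _ in rows)

sample = """\
uas state ha-active
uas ha-vip 172.17.181.101
INSTANCE IP STATE ROLE
-----------------------------------
172.17.180.6 alive CONFD-SLAVE
172.17.180.7 alive CONFD-MASTER
172.17.180.9 alive NA
"""
print(parse_uas_instances(sample))
print(uas_all_alive(sample))
```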

Note: If uas-check.py --fix fails, you might need to copy this file and run again.

[stack@director ~]$ mkdir -p /opt/cisco/usp/apps/auto-it/common/uas-deploy/

[stack@director ~]$ cp /opt/cisco/usp/uas-installer/common/uas-deploy/userdata-uas.txt /opt/cisco/usp/apps/auto-it/common/uas-deploy/

Recovery of ESC VM

Check the status of the ESC VM from the nova list and delete it:

[stack@director scripts]$ nova list |grep ESC-1

| c566efbf-1274-4588-a2d8-0682e17b0d41 | VNF2-ESC-ESC-1 | ACTIVE | - | Running | VNF2-UAS-uas-orchestration=172.168.11.14; VNF2-UAS-uas-management=172.168.10.4 |

[stack@director scripts]$ nova delete VNF2-ESC-ESC-1

Request to delete server VNF2-ESC-ESC-1 has been accepted.

From AutoVNF-UAS, find the ESC deployment transaction. In the log for that transaction, find the bootvm.py command line that was used to create the ESC instance:

ubuntu@VNF2-uas-uas-0:~$ sudo -i

root@VNF2-uas-uas-0:~# confd_cli -u admin -C

Welcome to the ConfD CLI

admin connected from 127.0.0.1 using console on VNF2-uas-uas-0

VNF2-uas-uas-0#show transaction

TX ID TX TYPE DEPLOYMENT ID TIMESTAMP STATUS

-----------------------------------------------------------------------------------------------------------------------------

35eefc4a-d4a9-11e7-bb72-fa163ef8df2b vnf-deployment VNF2-DEPLOYMENT 2017-11-29T02:01:27.750692-00:00 deployment-success

73d9c540-d4a8-11e7-bb72-fa163ef8df2b vnfm-deployment VNF2-ESC 2017-11-29T01:56:02.133663-00:00 deployment-success

VNF2-uas-uas-0#show logs 73d9c540-d4a8-11e7-bb72-fa163ef8df2b | display xml

<config xmlns="http://tail-f.com/ns/config/1.0">

<logs xmlns="http://www.cisco.com/usp/nfv/usp-autovnf-oper">

<tx-id>73d9c540-d4a8-11e7-bb72-fa163ef8df2b</tx-id>

<log>2017-11-29 01:56:02,142 - VNFM Deployment RPC triggered for deployment: VNF2-ESC, deactivate: 0

2017-11-29 01:56:02,179 - Notify deployment

..

2017-11-29 01:57:30,385 - Creating VNFM 'VNF2-ESC-ESC-1' with [python //opt/cisco/vnf-staging/bootvm.py VNF2-ESC-ESC-1 --flavor VNF2-ESC-ESC-flavor --image 3fe6b197-961b-4651-af22-dfd910436689 --net VNF2-UAS-uas-management --gateway_ip 172.168.10.1 --net VNF2-UAS-uas-orchestration --os_auth_url http://10.1.2.5:5000/v2.0 --os_tenant_name core --os_username ****** --os_password ****** --bs_os_auth_url http://10.1.2.5:5000/v2.0 --bs_os_tenant_name core --bs_os_username ****** --bs_os_password ****** --esc_ui_startup false --esc_params_file /tmp/esc_params.cfg --encrypt_key ****** --user_pass ****** --user_confd_pass ****** --kad_vif eth0 --kad_vip 172.168.10.7 --ipaddr 172.168.10.6 dhcp --ha_node_list 172.168.10.3 172.168.10.6 --file root:0755:/opt/cisco/esc/esc-scripts/esc_volume_em_staging.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_volume_em_staging.sh --file root:0755:/opt/cisco/esc/esc-scripts/esc_vpc_chassis_id.py:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_vpc_chassis_id.py --file root:0755:/opt/cisco/esc/esc-scripts/esc-vpc-di-internal-keys.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc-vpc-di-internal-keys.sh

Save the bootvm.py line to a shell script file (esc.sh) and replace all the masked username (******) and password (******) values with the correct information (typically core/<PASSWORD>). You also need to remove the --encrypt_key option. For user_pass and user_confd_pass, use the format username:password (for example, admin:<PASSWORD>).
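
The edits to the logged command line can be sketched in Python as follows. This is a minimal illustration, not a Cisco tool: the function name `rebuild_bootvm_command` and its parameters are hypothetical, and the sample input is a shortened version of the logged line. It drops --encrypt_key together with its value and fills in the masked values of the credential options named above.

```python
import shlex

def rebuild_bootvm_command(logged, os_user, os_password, user_pass, user_confd_pass):
    """Return the logged bootvm.py command with masked values filled in.

    user_pass and user_confd_pass are expected in username:password form.
    """
    replacements = {
        "--os_username": os_user,
        "--os_password": os_password,
        "--bs_os_username": os_user,
        "--bs_os_password": os_password,
        "--user_pass": user_pass,
        "--user_confd_pass": user_confd_pass,
    }
    tokens = shlex.split(logged)
    result = []
    i = 0
    while i < len(tokens):
        token = tokens[i]
        if token == "--encrypt_key":
            i += 2  # drop the option and its masked value
            continue
        result.append(token)
        if token in replacements and i + 1 < len(tokens):
            result.append(replacements[token])  # replace the masked value
            i += 2
            continue
        i += 1
    return shlex.join(result)

# Shortened sample of the logged line; the passwords below are placeholders.
logged = (
    "python bootvm.py VNF2-ESC-ESC-1 --os_username ****** --os_password ****** "
    "--encrypt_key ****** --user_pass ****** --user_confd_pass ****** --kad_vif eth0"
)
print(rebuild_bootvm_command(
    logged, "core", "example-password", "admin:example-password", "admin:example-password"
))
```

Review the generated line before you save it to esc.sh, because the script does not validate any of the other options.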

Find the URL of bootvm.py in the running-config and use wget to download the bootvm.py file to the autovnf-uas VM. In this case, 10.1.2.3 is the IP address of the Auto-IT VM:

root@VNF2-uas-uas-0:~# confd_cli -u admin -C

Welcome to the ConfD CLI

admin connected from 127.0.0.1 using console on VNF2-uas-uas-0

VNF2-uas-uas-0#show running-config autovnf-vnfm:vnfm

…

configs bootvm

value http://10.1.2.3:80/bundles/5.1.7-2007/vnfm-bundle/bootvm-2_3_2_155.py

!

root@VNF2-uas-uas-0:~# wget http://10.1.2.3:80/bundles/5.1.7-2007/vnfm-bundle/bootvm-2_3_2_155.py

--2017-12-01 20:25:52-- http://10.1.2.3/bundles/5.1.7-2007/vnfm-bundle/bootvm-2_3_2_155.py

Connecting to 10.1.2.3:80... connected.

HTTP request sent, awaiting response... 200 OK

Length: 127771 (125K) [text/x-python]

Saving to: ‘bootvm-2_3_2_155.py’

100%[=====================================================================================>] 127,771 --.-K/s in 0.001s

2017-12-01 20:25:52 (173 MB/s) - ‘bootvm-2_3_2_155.py’ saved [127771/127771]

Create a /tmp/esc_params.cfg file:

root@VNF2-uas-uas-0:~# echo "openstack.endpoint=publicURL" > /tmp/esc_params.cfg

Run the shell script in order to deploy ESC from the UAS node:

root@VNF2-uas-uas-0:~# /bin/sh esc.sh

+ python ./bootvm.py VNF2-ESC-ESC-1 --flavor VNF2-ESC-ESC-flavor --image 3fe6b197-961b-4651-af22-dfd910436689

--net VNF2-UAS-uas-management --gateway_ip 172.168.10.1 --net VNF2-UAS-uas-orchestration --os_auth_url

http://10.1.2.5:5000/v2.0 --os_tenant_name core --os_username core --os_password <PASSWORD> --bs_os_auth_url

http://10.1.2.5:5000/v2.0 --bs_os_tenant_name core --bs_os_username core --bs_os_password <PASSWORD>

--esc_ui_startup false --esc_params_file /tmp/esc_params.cfg --user_pass admin:<PASSWORD> --user_confd_pass

admin:<PASSWORD> --kad_vif eth0 --kad_vip 172.168.10.7 --ipaddr 172.168.10.6 dhcp --ha_node_list 172.168.10.3

172.168.10.6 --file root:0755:/opt/cisco/esc/esc-scripts/esc_volume_em_staging.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_volume_em_staging.sh

--file root:0755:/opt/cisco/esc/esc-scripts/esc_vpc_chassis_id.py:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_vpc_chassis_id.py

--file root:0755:/opt/cisco/esc/esc-scripts/esc-vpc-di-internal-keys.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc-vpc-di-internal-keys.sh

Log in to the new ESC and verify that it is in the Backup state:

ubuntu@VNF2-uas-uas-0:~$ ssh admin@172.168.11.14

…

####################################################################

# ESC on VNF2-esc-esc-1.novalocal is in BACKUP state.

####################################################################

[admin@VNF2-esc-esc-1 ~]$ escadm status

0 ESC status=0 ESC Backup Healthy

[admin@VNF2-esc-esc-1 ~]$ health.sh

============== ESC HA (BACKUP) ===================================================

ESC HEALTH PASSED

Recover CF and EM VMs from ESC

Check the status of the CF and EM VMs from the nova list. They must be in the ERROR state:

[stack@director ~]$ source corerc

[stack@director ~]$ nova list --field name,host,status |grep -i err

| 507d67c2-1d00-4321-b9d1-da879af524f8 | VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea | None | ERROR |

| f9c0763a-4a4f-4bbd-af51-bc7545774be2 | VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229 | None | ERROR |
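
To collect the VM names that need recovery, the table rows can be parsed instead of read by eye. The following is a minimal Python sketch; the function name `vms_in_error` is hypothetical, and it assumes the four-column layout (ID, name, host, status) produced by `nova list --field name,host,status` as shown above.

```python
def vms_in_error(nova_list_text):
    """Return the names of VMs whose status column is ERROR."""
    names = []
    for line in nova_list_text.splitlines():
        # Drop the outer pipes, then split the row into its cells.
        cells = [cell.strip() for cell in line.strip().strip("|").split("|")]
        if len(cells) == 4 and cells[3] == "ERROR":
            names.append(cells[1])
    return names

# Rows copied from the nova list output in this document.
sample = """\
| 507d67c2-1d00-4321-b9d1-da879af524f8 | VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea | None | ERROR |
| f9c0763a-4a4f-4bbd-af51-bc7545774be2 | VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229 | None | ERROR |
"""
for name in vms_in_error(sample):
    print(name)
```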

Log in to the Master ESC and run recovery-vm-action for each impacted EM and CF VM. Be patient: ESC schedules the recovery action, and it might not start for a few minutes. Monitor yangesc.log:

sudo /opt/cisco/esc/esc-confd/esc-cli/esc_nc_cli recovery-vm-action DO <VM-Name>

[admin@VNF2-esc-esc-0 ~]$ sudo /opt/cisco/esc/esc-confd/esc-cli/esc_nc_cli recovery-vm-action DO VNF2-DEPLOYMENT-_VNF2-D_0_a6843886-77b4-4f38-b941-74eb527113a8

[sudo] password for admin:

Recovery VM Action

/opt/cisco/esc/confd/bin/netconf-console --port=830 --host=127.0.0.1 --user=admin --privKeyFile=/root/.ssh/confd_id_dsa --privKeyType=dsa --rpc=/tmp/esc_nc_cli.ZpRCGiieuW

<?xml version="1.0" encoding="UTF-8"?>

<rpc-reply xmlns="urn:ietf:params:xml:ns:netconf:base:1.0" message-id="1">

<ok/>

</rpc-reply>

[admin@VNF2-esc-esc-0 ~]$ tail -f /var/log/esc/yangesc.log

…

14:59:50,112 07-Nov-2017 WARN Type: VM_RECOVERY_COMPLETE

14:59:50,112 07-Nov-2017 WARN Status: SUCCESS

14:59:50,112 07-Nov-2017 WARN Status Code: 200

14:59:50,112 07-Nov-2017 WARN Status Msg: Recovery: Successfully recovered VM [VNF2-DEPLOYMENT-_VNF2-D_0_a6843886-77b4-4f38-b941-74eb527113a8]
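
When several VMs are recovered, the success messages in yangesc.log can be collected with a short script. The following is a minimal Python sketch; the function name `recovered_vms` is hypothetical, and the pattern assumes the exact "Successfully recovered VM [...]" wording shown in the log excerpt above.

```python
import re

RECOVERED = re.compile(r"Recovery: Successfully recovered VM \[([^\]]+)\]")

def recovered_vms(log_text):
    """Return the VM names reported as successfully recovered, in log order."""
    return RECOVERED.findall(log_text)

# Lines copied from the yangesc.log excerpt in this document.
sample = """\
14:59:50,112 07-Nov-2017 WARN Type: VM_RECOVERY_COMPLETE
14:59:50,112 07-Nov-2017 WARN Status: SUCCESS
14:59:50,112 07-Nov-2017 WARN Status Code: 200
14:59:50,112 07-Nov-2017 WARN Status Msg: Recovery: Successfully recovered VM [VNF2-DEPLOYMENT-_VNF2-D_0_a6843886-77b4-4f38-b941-74eb527113a8]
"""
print(recovered_vms(sample))
```

Compare the returned names with the list of VMs that were in the ERROR state to confirm that none were missed.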

Log in to the new EM and verify that the EM state is up:

ubuntu@VNF2vnfddeploymentem-1:~$ /opt/cisco/ncs/current/bin/ncs_cli -u admin -C

admin connected from 172.17.180.6 using ssh on VNF2vnfddeploymentem-1

admin@scm# show ems

EM VNFM

ID SLA SCM PROXY

---------------------

2 up up up

3 up up up

Log in to the StarOS VNF and verify that the CF card is in the standby state.

Case 2. OSD-Compute Node Hosting Auto-IT, Auto-deploy, EM and UAS

Recovery of Auto-Deploy VM

From OSPD, if the auto-deploy VM was impacted but still shows ACTIVE/Running, you need to delete it first. If auto-deploy was not impacted, skip to Recovery of Auto-IT VM:

[stack@director ~]$ nova list |grep auto-deploy

| 9b55270a-2dcd-4ac1-aba3-bf041733a0c9 | auto-deploy-ISO-2007-uas-0 | ACTIVE | - | Running | mgmt=172.16.181.12, 10.1.2.7 |

[stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts

[stack@director scripts]$ ./auto-deploy-booting.sh --floating-ip 10.1.2.7 --delete

Once auto-deploy is deleted, create it again with the same floating IP address:

[stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts

[stack@director scripts]$ ./auto-deploy-booting.sh --floating-ip 10.1.2.7

2017-11-17 07:05:03,038 - INFO: Creating AutoDeploy deployment (1 instance(s)) on 'http://10.84.123.4:5000/v2.0' tenant 'core' user 'core', ISO 'default'

2017-11-17 07:05:03,039 - INFO: Loading image 'auto-deploy-ISO-5-1-7-2007-usp-uas-1.0.1-1504.qcow2' from '/opt/cisco/usp/uas-installer/images/usp-uas-1.0.1-1504.qcow2'

2017-11-17 07:05:14,603 - INFO: Loaded image 'auto-deploy-ISO-5-1-7-2007-usp-uas-1.0.1-1504.qcow2'

2017-11-17 07:05:15,787 - INFO: Assigned floating IP '10.1.2.7' to IP '172.16.181.7'

2017-11-17 07:05:15,788 - INFO: Creating instance 'auto-deploy-ISO-5-1-7-2007-uas-0'

2017-11-17 07:05:42,759 - INFO: Created instance 'auto-deploy-ISO-5-1-7-2007-uas-0'

2017-11-17 07:05:42,759 - INFO: Request completed, floating IP: 10.1.2.7

Copy the autodeploy.cfg file, the ISO, and the confd_backup tar file from your backup server to the Auto-Deploy VM, and restore the confd cdb files from the backup tar file:

ubuntu@auto-deploy-iso-2007-uas-0:~$ sudo -i

root@auto-deploy-iso-2007-uas-0:~# service uas-confd stop

uas-confd stop/waiting

root@auto-deploy-iso-2007-uas-0:~# cd /opt/cisco/usp/uas/confd-6.3.1/var/confd

root@auto-deploy-iso-2007-uas-0:/opt/cisco/usp/uas/confd-6.3.1/var/confd# tar xvf /home/ubuntu/ad_cdb_backup.tar

cdb/

cdb/O.cdb

cdb/C.cdb

cdb/aaa_init.xml

cdb/A.cdb

root@auto-deploy-iso-2007-uas-0:~# service uas-confd start

uas-confd start/running, process 2036

Verify that confd loaded properly by checking the earlier transactions. Update autodeploy.cfg with the new OSD-Compute name. Refer to the section Auto-Deploy Configuration Update:

root@auto-deploy-iso-2007-uas-0:~# confd_cli -u admin -C

Welcome to the ConfD CLI

admin connected from 127.0.0.1 using console on auto-deploy-iso-2007-uas-0

auto-deploy-iso-2007-uas-0#show transaction

SERVICE SITE

DEPLOYMENT SITE TX AUTOVNF VNF AUTOVNF

TX ID TX TYPE ID DATE AND TIME STATUS ID ID ID ID TX ID

-------------------------------------------------------------------------------------------------------------------------------------

1512571978613 service-deployment tb5bxb 2017-12-06T14:52:59.412+00:00 deployment-success

auto-deploy-iso-2007-uas-0# exit

Recovery of Auto-IT VM

From OSPD, if the auto-it VM was impacted but still shows as ACTIVE/Running, it needs to be deleted. If auto-it was not impacted, skip to the next VM:

[stack@director ~]$ nova list |grep auto-it

| 580faf80-1d8c-463b-9354-781ea0c0b352 | auto-it-vnf-ISO-2007-uas-0 | ACTIVE | - | Running | mgmt=172.16.181.3, 10.1.2.8 |

[stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts

[stack@director scripts]$ ./auto-it-vnf-staging.sh --floating-ip 10.1.2.8 --delete

Run the auto-it-vnf staging script in order to recreate auto-it:

[stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts

[stack@director scripts]$ ./auto-it-vnf-staging.sh --floating-ip 10.1.2.8

2017-11-16 12:54:31,381 - INFO: Creating StagingServer deployment (1 instance(s)) on 'http://10.84.123.4:5000/v2.0' tenant 'core' user 'core', ISO 'default'

2017-11-16 12:54:31,382 - INFO: Loading image 'auto-it-vnf-ISO-5-1-7-2007-usp-uas-1.0.1-1504.qcow2' from '/opt/cisco/usp/uas-installer/images/usp-uas-1.0.1-1504.qcow2'

2017-11-16 12:54:51,961 - INFO: Loaded image 'auto-it-vnf-ISO-5-1-7-2007-usp-uas-1.0.1-1504.qcow2'

2017-11-16 12:54:53,217 - INFO: Assigned floating IP '10.1.2.8' to IP '172.16.181.9'

2017-11-16 12:54:53,217 - INFO: Creating instance 'auto-it-vnf-ISO-5-1-7-2007-uas-0'

2017-11-16 12:55:20,929 - INFO: Created instance 'auto-it-vnf-ISO-5-1-7-2007-uas-0'

2017-11-16 12:55:20,930 - INFO: Request completed, floating IP: 10.1.2.8

Reload the ISO image. In this case, the auto-it IP address is 10.1.2.8. This will take a few minutes to load:

[stack@director ~]$ cd images/5_1_7-2007/isos

[stack@director isos]$ curl -F file=@usp-5_1_7-2007.iso http://10.1.2.8:5001/isos

{

"iso-id": "5.1.7-2007"

}

Verify that the ISO image is loaded:

[stack@director isos]$ curl http://10.1.2.8:5001/isos

{

"isos": [

{

"iso-id": "5.1.7-2007"

}

]

}
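
The verification of the ISO list can be sketched in Python as follows. This is a minimal illustration that only inspects a response body that you have already fetched; the function name `iso_is_loaded` is hypothetical, and the JSON shape is assumed to match the response shown above.

```python
import json

def iso_is_loaded(response_body, iso_id):
    """Return True when the /isos response lists the given iso-id."""
    data = json.loads(response_body)
    return any(entry.get("iso-id") == iso_id for entry in data.get("isos", []))

# Response body copied from the curl output in this document.
sample = """
{
  "isos": [
    {
      "iso-id": "5.1.7-2007"
    }
  ]
}
"""
print(iso_is_loaded(sample, "5.1.7-2007"))
```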

Copy the VNF system.cfg files from the OSPD Auto-Deploy directory to the auto-it VM:

[stack@director autodeploy]$ scp system-vnf* ubuntu@10.1.2.8:.

ubuntu@10.1.2.8's password:

system-vnf1.cfg 100% 1197 1.2KB/s 00:00

system-vnf2.cfg 100% 1197 1.2KB/s 00:00

ubuntu@auto-it-vnf-iso-2007-uas-0:~$ pwd

/home/ubuntu

ubuntu@auto-it-vnf-iso-2007-uas-0:~$ ls

system-vnf1.cfg system-vnf2.cfg

Note: The recovery procedure for the EM and UAS VMs is the same in both cases. Refer to the Case 1 section for the steps.

Handle ESC Recovery Failure

If ESC fails to start the VM because of an unexpected state, Cisco recommends that you perform an ESC switchover by rebooting the Master ESC. The switchover takes about a minute. Run the health.sh script on the new Master ESC in order to check that its status is up. The new Master ESC then starts the VM and fixes the VM state. This recovery task takes up to five minutes to complete.

You can monitor /var/log/esc/yangesc.log and /var/log/esc/escmanager.log. If the VM is not recovered after 5-7 minutes, you need to perform a manual recovery of the impacted VM(s).
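
The wait described above can be expressed as a polling loop with a deadline. The following is a minimal Python sketch, assuming that you supply your own check function (for example, one that looks for the recovery message in yangesc.log). The name `wait_for` and the 15-second polling interval are hypothetical choices; the default timeout of 420 seconds corresponds to the upper end of the 5-7 minute window.

```python
import time

def wait_for(check, timeout_s=420, interval_s=15, clock=time.monotonic, sleep=time.sleep):
    """Call check() until it returns True or timeout_s elapses.

    Returns True on success and False when the deadline passes, which is
    the point at which manual recovery would be considered.
    """
    deadline = clock() + timeout_s
    while True:
        if check():
            return True
        if clock() >= deadline:
            return False
        sleep(interval_s)

# Trivial demonstration with a check that succeeds immediately.
print(wait_for(lambda: True))
```

The clock and sleep parameters exist only so that the loop can be exercised without real delays; in normal use the defaults apply.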

Auto-Deploy Configuration Update

From the Auto-Deploy VM, edit autodeploy.cfg and replace the old OSD-Compute server with the new one. Then run load replace in confd_cli. This step is required for successful deployment deactivation later.

root@auto-deploy-iso-2007-uas-0:/home/ubuntu# confd_cli -u admin -C

Welcome to the ConfD CLI

admin connected from 127.0.0.1 using console on auto-deploy-iso-2007-uas-0

auto-deploy-iso-2007-uas-0#config

Entering configuration mode terminal

auto-deploy-iso-2007-uas-0(config)#load replace autodeploy.cfg

Loading. 14.63 KiB parsed in 0.42 sec (34.16 KiB/sec)

auto-deploy-iso-2007-uas-0(config)#commit

Commit complete.

auto-deploy-iso-2007-uas-0(config)#end

Restart uas-confd and Auto-Deploy services after the configuration change:

root@auto-deploy-iso-2007-uas-0:~# service uas-confd restart

uas-confd stop/waiting

uas-confd start/running, process 14078

root@auto-deploy-iso-2007-uas-0:~# service uas-confd status

uas-confd start/running, process 14078

root@auto-deploy-iso-2007-uas-0:~# service autodeploy restart

autodeploy stop/waiting

autodeploy start/running, process 14017

root@auto-deploy-iso-2007-uas-0:~# service autodeploy status

autodeploy start/running, process 14017
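
The status lines above follow the `<service> start/running, process <PID>` pattern, so the check can be automated. The following is a minimal Python sketch; the function name `service_running` is hypothetical, and the pattern assumes the status format shown in this document.

```python
import re

def service_running(status_line, service):
    """Return True when the status line reports the service as start/running."""
    pattern = r"^%s start/running, process \d+$" % re.escape(service)
    return re.match(pattern, status_line.strip()) is not None

# Status lines copied from the output in this document.
print(service_running("uas-confd start/running, process 14078", "uas-confd"))
print(service_running("autodeploy start/running, process 14017", "autodeploy"))
```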

Enabling Syslogs

To enable syslogs for the UCS server, OpenStack components, and the recovered VMs, follow the sections "Re-Enable syslog for UCS and Openstack components" and "Enable syslog for the VNFs" in the link below:

Revision History

| Revision | Publish Date | Comments |

|---|---|---|

| 1.0 | 10-Jul-2018 | Initial Release |

Contributed by Cisco Engineers

- Partheeban Rajagopal, Cisco Advanced Services

- Padmaraj Ramanoudjam, Cisco Advanced Services
