SDN and its Role in Automating & Scaling in the Data Center

About the Author: Nat Lawrence

Nat Lawrence is a Cisco IT network engineer working on the team responsible for managing, maintaining, and optimizing CAPNet, the Cisco global backbone network. CAPNet supports all internal communications among our branch, campus, extranet, DeMilitarized Zone (DMZ) and data center locations. His university studies were informed by experience in working at Cisco, with its use of the industry-leading technologies, standards, and protocols that govern information and communication systems.

Nat’s interest in Cisco technologies began in 2006, when he found that Cisco’s literature and publications spoke to those who wanted a future in the industry. Upon joining Cisco in 2014, Nat realized it was a time of transition from intelligent hardware to automation, network programmability and Software-Defined Networking (SDN). He decided to use his research to assist those who also come from an infrastructure-centric background in order to upskill for the future.

The result was a thesis written for Nat’s dual Bachelor of Science degree (Honors) in IT and Computing at The Open University in the UK: “Software Defined Networking (SDN) and its role in automating the provision and scaling of cloud native architectures through network programmability.” The aim of the thesis is to investigate the commercial, technical, and operational opportunities afforded to cloud and enterprise providers considering investment in programmable networks. Nat’s goal for this thesis is to provide a forward-thinking body of research that equips engineers, service managers, network providers, and C-suite executives with data they can use to remain relevant as networks change in the future.

Contents

Network administration in the cloud

Legal, social, ethical and professional issues

ACL = Access Control List

AIO SDN/NFV VM = All In One Software Defined Networking/Network Function Virtualization Virtual Machine

API = Application Programming Interface

ARP = Address Resolution Protocol

AS = Autonomous System

ASIC = Application Specific Integrated Circuit

BD = Broadcast Domain

BW = Bandwidth

CAM = Content Addressable Memory

CEF = Cisco Express Forwarding

CIA = Central Intelligence Agency

CNAs = Cloud Native Applications

CPU = Central Processing Unit

DB = Database

DC = Datacenter

DHCP = Dynamic Host Configuration Protocol

DNS = Domain Name System

DP = Designated Port (in STP)

ECMP = Equal Cost Multipath Routing

EIGRP = Enhanced Interior Gateway Routing Protocol

EoR = End of Row

EP = Endpoint/network host

ERP = Enterprise Resource Planning

FHRP = First Hop Redundancy Protocol

FTP = File Transfer Protocol

GUI = Graphical User Interface

HFT = High Frequency Trading

HPC = High Performance Computing

HSRP = Hot Standby Router Protocol

HTTP = Hyper Text Transfer Protocol

IDC = International Data Corporation

IDF = Intermediate Distribution Frame

IDS/IPS = Intrusion Detection System/Intrusion Prevention System

IEEE = Institute of Electrical and Electronics Engineers

IETF = Internet Engineering Task Force

IoT = Internet of Things

JSON = JavaScript Object Notation

KVM = Kernel-based Virtual Machine

LISP = Locator ID Separation Protocol

MDF = Main Distribution Frame

NAT = Network Address Translation

NFV = Network Function Virtualisation

NHS = National Health Service

NIC = Network Interface Card

NMS = Network Management System

NSA = National Security Agency

NSH = Network Service Header

ODL = OpenDaylight

OS = Operating System

OSPF = Open Shortest Path First Protocol

PAT = Port Address Translation

QoS = Quality of Service

RAM = Random Access Memory

RB = Root Bridge (in STP)

REST = Representational State Transfer

RFC = Request for Comments

RP = Root Port (in STP)

RTE = Runtime Environment

R&D = Research and Development

SDN = Software Defined Networking

SLA = Service Level Agreement

SMTP = Simple Mail Transfer Protocol

SPB = Shortest Path Bridging

STP = Spanning Tree Protocol

ROI = Return on Investment

TCO = Total Cost of Ownership

TCP/IP = Transmission Control Protocol/Internet Protocol

ToR = Top of Rack

TOSCA = Topology and Orchestration Specification for Cloud Applications

TRILL = Transparent Interconnection of Lots of Links

UDP = User Datagram Protocol

VLANs = Virtual Local Area Networks

VM = Virtual Machine

VTEP = VXLAN Tunnel Endpoint

VXLAN = Virtual eXtensible LAN

WSA = Web Service Architecture

WSNs = Wireless Sensor Networks

XML = Extensible Markup Language

i. Introduction

ii. Provide background on cloud computing

iii. Highlight the IoT and its impact on the computing industry

iv. Discuss network architecture, namely the three-tier hierarchical model, describing its limitations in large scale DCs

v. Discuss oversubscription in the DC and how it is managed

vi. Define spine-leaf network infrastructure design and explore its benefits over the three-tiered model in large scale DCs

vii. Compare and contrast monolithic vs cloud native applications

viii. Discuss the need for orchestration and automation in the cloud

ix. Describe the roles OpenStack and virtualisation play in cloud architecture

x. Introduce VLAN/Layer 2 semantics, flood and learn switching mechanics and Ethernet frame structure

xi. Introduce overlays and describe how VXLAN can mitigate key DC challenges

xii. Describe SDN and NFV and their role in accelerating and automating network services

xiii. Discuss open standards and their role in the integration of computing technologies

xiv. Provide a SDN cloud specific use-case

xv. Discuss societal factors concerning the use of SDN

xvi. What are the legal, social, ethical and professional considerations

xvii. References

xviii. Bibliography

xix. Review and reflection

xx. Risk Assessment

xxi. Scope of work and benefits of an SDN approach

xxii. Account of related literature

xxiii. Appendices

Executive Summary

Section 1

Provide an introduction into cloud computing and the impact of IoT on the computing industry.

Provide network architecture concepts to set context. Compare the three tiered, hierarchical model with spine-leaf; highlighting the challenges and differences in how they impact large scale data center (DC) operations. The purpose is to highlight the limitation of physical networks within cloud provider environments; alluding to the requirement for sophistication in software to provide innovations that can scale and optimize elastic cloud computing services.

Describe oversubscription in the DC and discuss the characteristics of cloud native applications and how their functions relate to the underlying network infrastructure vs a monolithic application. This helps build the story as cloud providers take their journey towards a cloud native environment to provide self-healing, highly available, fault tolerant computing services.

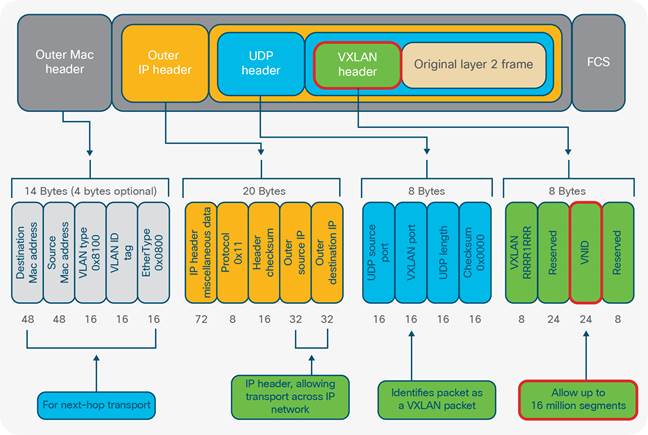

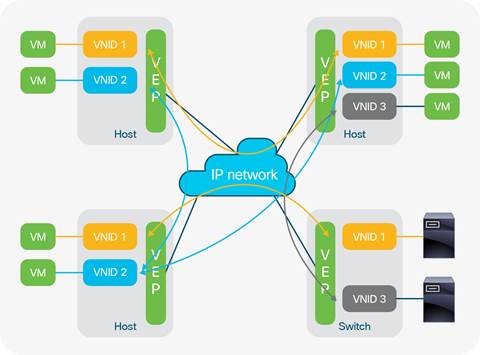

Describe current VLAN scaling limitations in large DC environments requiring tens of thousands of network segments. Explain how VXLAN provides flexibility and scalability in cloud native environments. Discuss the role of virtualization and cloud orchestration platforms like OpenStack in the DC.

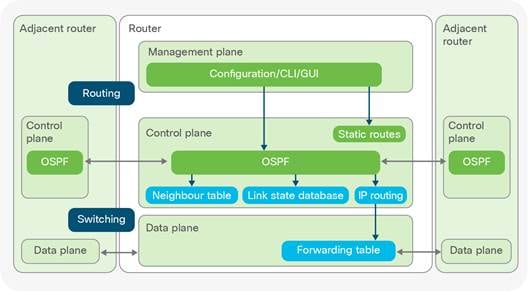

Define SDN and contrast with NFV stating why standards based technologies are important for interoperability.

Provide a cloud orientated SDN use-case.

Explore the societal, legal, social and professional considerations for cloud providers developing and delivering SDN based computing solutions.

Provide a conclusion and link it to the aims of the project.

Provide a review and reflection on the project highlighting any challenges and planning methods.

Give a risk assessment on the scope of the topic and what considerations were made to complete the topic within the time constraints.

Provide an account of related literature, describing how authoritative sources were chosen.

The aim of this paper is to investigate the commercial, technical and operational opportunities afforded to cloud providers who invest in programmable networks in the Data Center (DC). With the consistent growth seen within the cloud computing industry (Gartner, 2016), cloud operators are constantly addressing ways to maximize Information Technology (IT) investment while assessing how to effectively manage the mounting complexity seen within the DC. The IT organization represents a huge but essential cost center for enterprise investors and stakeholders.

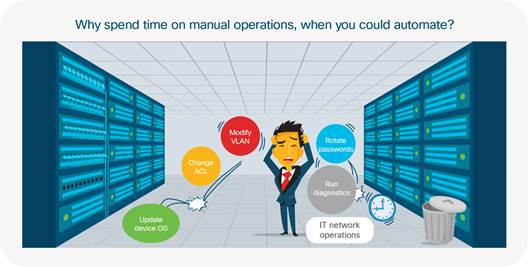

The core of this investment (network infrastructure) must offer opportunities for innovations that provide continual operational efficiencies that accelerate the delivery of new network services powered by the cloud. The concept of network programmability and Software Defined Networking (SDN) enables public and private cloud operators to address mounting challenges concerning the implementation, scaling and optimization of elastic cloud computing services. Such challenges have yet to be adequately mitigated by physical remediation measures. In enterprise networks, current administration processes require a manual, device level approach where each infrastructure device is iteratively configured to apply the latest configuration updates. These changes are time consuming and often present roadblocks to agile development teams who constantly aim to turn out quick releases of new software features with ever shortening development cycles (Mueller, 2016). Rather than rapidly enabling new features and services for a quicker time to market; when configured imperatively, the network often represents an extensive cost to the enterprise that contributes to the sclerotic delivery of production services.

To keep up with the trajectory of innovation seen in leading public and private cloud deployments, network upgrades that once took IT operations teams months to complete now need to take minutes (Cisco, 2015a).

This thesis addresses the market transition away from imperatively administered networks to a declarative model. This declarative concept provides a programmable network infrastructure through the exposure of Application Programming Interfaces (APIs) facilitated by open standards to optimize and expedite the business outcomes of cloud operators. SDN provides rich and sophisticated options for cloud providers to rapidly provision and scale their DC architecture. Some of the opportunities and trade-offs will be explored, with insight into the technologies leading the way towards autonomic networking. The objective is to give the reader an understanding of the foundations, inherent technologies and concepts that power elastic cloud computing services. The limitations of human-to-machine provisioning have opened the door for an alternative approach. This provides cloud operators with powerful ways to introduce value added computing features and services to minimize operational costs while delivering services quicker to market. SDN offers a layer of abstraction where operators can use declarative, policy driven models to rapidly align, integrate and execute enterprise requirements within the machinations of IT operations. This paper will favor cloud providers on their journey towards automating their infrastructure. Secondly, infrastructure engineers embracing automation through programmable networks will also find interest in these pages. Software Defined Networking beckons as a new paradigm shift designed to abstract complexity while facilitating agile options toward business transformation.

This paper forms investigative research into the evaluation of evolving infrastructure requirements for cloud providers in their journey towards their adoption of SDN practices. In 1981 when the Internet Protocol (IP) became ratified by the Internet Engineering Task Force (IETF) in Request for Comments (RFC) 791, Transmission Control Protocol/Internet Protocol (TCP/IP) networks were built and optimized for north-to-south traffic patterns; forwarding IP packets between client-to-server and peer-to-peer architectures. Thirty years later, the diverse richness of Internet traffic has transformed the landscape of how data is consumed. Causative factors include the digital revolution, multi-megabit enterprise broadband speeds, the rise of 4G/5G networks and exploding mobile device density. These factors coupled with the need for persistent access to pervasive Enterprise Resource Planning (ERP) applications serve to evidence the trajectory of Internet consumption requirements since the wide adoption and subsequent diffusion of the Internet Protocol (IP) (Hamblen, 2014).

The unprecedented rate at which data consumption has increased within our hyper-connected world of smart devices, Wireless Sensor Networks (WSNs) and Internet of Things (IoT) architectures has illuminated mounting challenges for cloud operators. Enterprise architectures have witnessed user requirements transform from simple web browsing and email functionality to include instant messaging, video conferencing, on-demand video and real-time streaming. The phasing out of paper based workflows replaced by electronic business processes has necessitated the recent wave in digitization seen within the enterprise (Weill and Woerner, 2014). The move towards electronic, automated business processes has precipitated accelerated consumption of network resources for users in aberrant ways (Cisco, 2016a).

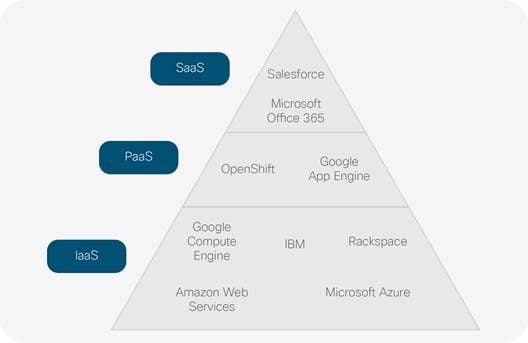

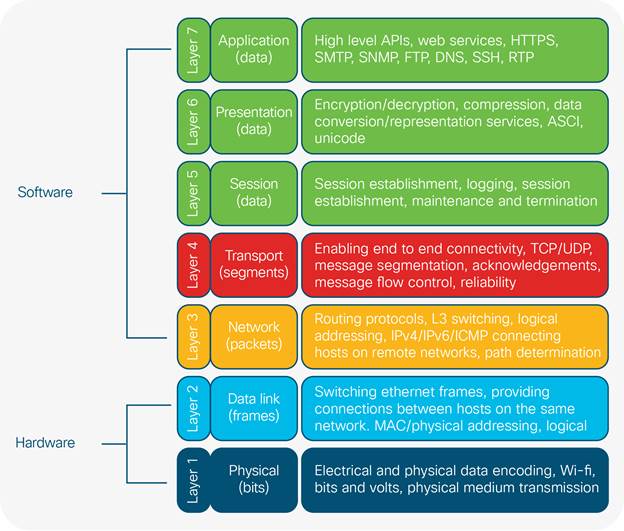

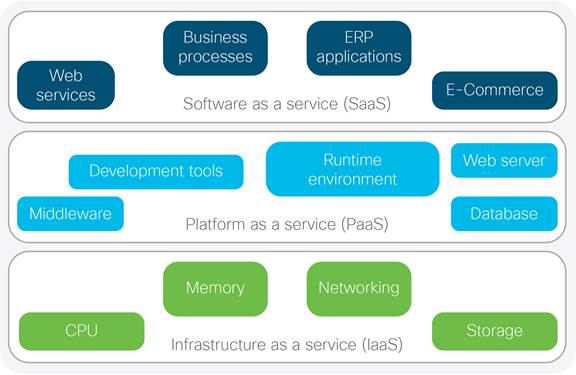

Growth and profitability remain top of mind for enterprise CEOs, and the need for increased efficiencies in business continuity, scalability and cost savings holds great importance. These overarching objectives are amongst the primary drivers responsible for emergent cloud consumption models (Rossi, 2015). The cloud represents an ecosystem of highly integrated computing platforms installed across both hardware and software architectures. The physical and logical components of the cloud fulfil infrastructure, platform and application services providing highly available web applications supporting multiple remote customers as a service (Fig 1a). With worldwide cloud service revenue forecasted to reach $204 billion in 2016 (Gartner, 2016), cloud operators are competing fervently to deliver their highest value proposition. Fig 1b illustrates popular cloud providers with current managed services within the marketplace:

The SaaS layer exposes cloud applications that customers interface with and consume through web protocols like Hypertext Transfer Protocol over Transport Layer Security (HTTPS). PaaS offers a software platform for middleware, databases and development tools providing open options for service integration testing and debugging application Runtime Environments (RTE). The infrastructure layer (IaaS) provides the underlying compute, storage, memory and networking requirements; offering hardware resources for the entire stack. With the global 2015 cloud infrastructure spend estimated at $32.6 billion (IDC, 2015); cloud computing has created economies of scale for providers delivering managed services through public/private cloud strategies. Analysts have projected the annual revenue will rise to $241.13 billion by 2022 (Cloud IT, 2016). Providers are constantly looking at ways to rapidly improve their bottom line and limit operational costs, while accelerating go to market strategies. To succeed in these three crucial areas, providers are searching for ways to leverage and optimize a huge cost center - the Data Center (DC) network.

The rise of cloud computing as a consumption model for enterprise applications has been partially driven by the popularity of virtualization technologies. Virtualization within the DC offers multitenancy, giving cloud operators scope and flexibility when scaling enterprise infrastructure. This scalability introduces complexity by exponentially increasing End Point (EP) density; exacerbating server-to-server/east-to-west traffic flows between distributed application tiers within and between DCs (Alizadeh and Edsall, 2013). According to Gartner (2016), cloud service markets worldwide are projected to increase 16.5% from $175 billion in 2015 to $204 billion in 2016. This growth is seeing enterprises considering the challenges around migrating their on-site ERP applications to the cloud (Fig 1b).

The Internet of Things (IoT) is a growing market vertical connecting people, processes and things. These ‘things’ are smart sensors/devices with on-board compute and network connectivity (Eccleston, 2014). The data passing between IoT devices translates the analogue measurements of the physical world into discrete electronic data passing through the network. This data is captured through the use of Wireless Sensor Networks (WSNs), electronic devices, Radio-Frequency Identification (RFID) tags, actuators and embedded software. The collective intelligence gained from the interpretation of data captured from these devices provides interesting use-cases and value propositions for data mining, machine learning and predictive analysis. This information presents market trends that can build efficiencies across industries such as retail, healthcare, manufacturing, transport and energy/utilities (Columbus, 2015).

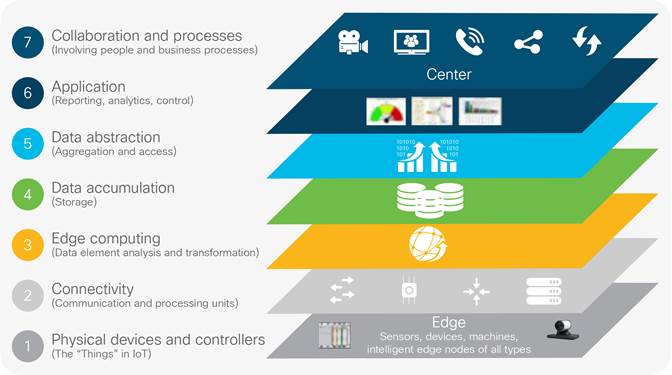

The relationships and interactions within the IoT ecosystem (Fig 2) present a rich architecture of products, services and technologies creating new economies of scale. This offers lucrative analytics and integration opportunities for providers exposing software products and managed services for IoT devices (Iyer, 2015). With an estimated 50 billion devices connected to the Internet by 2020 (DHL, 2015); IoT has already facilitated innovations in smart cities/homes, supply chain, manufacturing and connected vehicles. Fig 2 depicts a heuristic model, decoupling the layered IoT architecture presented by the IoT World Forum:

With the multitude of IoT devices, products and services passing data across the network, great administrative burden is introduced to provision end-to-end data transportation reliably and securely. To adequately manage the growth from the 25 billion devices connected in 2015 to the 50 billion projected by 2020 (Cisco, 2014a), the automation of routine provisioning and administration tasks is imperative.

For the cloud to continue providing highly available and scalable computing services, the underlying infrastructure requires fundamental changes in how it is provisioned, maintained and architected (Cisco, 2014a).

Large scale DC deployments still feature the legacy, three-tiered architecture model. Known for supporting a dizzying array of interlacing protocols, these complex networks are difficult to troubleshoot and suffer debilitating administrative choke points related to static provisioning, poor path determination and saturated link utilization. The ability to dynamically adapt to divergent business challenges while rapidly integrating new features is paralyzed without automation and programmability. The litany of mission critical applications, micro-services, translation of business process, access rules, and infrastructure requirements within the DC is a complex administrative quandary. These challenges stifle innovation as network professionals are impeded by routine tasks, operations support and retroactive troubleshooting. The handicap of the human-to-machine Command Line Interface (CLI) method is its inability to scale fast enough. If 500 DC switches need an Internetwork Operating System (IOS) upgrade, a Network Engineer would have to Secure Shell (SSH) to each and every solitary device to perform the administrative steps required to execute and verify the service upgrade. This imperative, device level approach is too slow and cannot scale in large scale DC environments (Cisco, 2014b). Before delving into how the adoption of a SDN approach can benefit cloud providers, some background in network architecture is given for context concerning the current DC landscape.
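The scaling problem described above can be made concrete with some simple arithmetic. The following is a minimal sketch rather than a measurement: the figure of 500 switches comes from the text, while the 15 minutes per device and the batch size of 50 concurrent upgrades are purely illustrative assumptions, as are the function names.

```python
import math


def sequential_hours(devices: int, minutes_per_device: float) -> float:
    """Hours needed when one engineer upgrades devices one at a time over SSH."""
    return devices * minutes_per_device / 60


def batched_hours(devices: int, minutes_per_device: float, batch_size: int) -> float:
    """Hours needed when a tool upgrades `batch_size` devices concurrently."""
    batches = math.ceil(devices / batch_size)
    return batches * minutes_per_device / 60


DEVICES = 500             # from the text
MINUTES_PER_DEVICE = 15   # assumption, for illustration only
BATCH_SIZE = 50           # assumption, for illustration only

manual = sequential_hours(DEVICES, MINUTES_PER_DEVICE)
automated = batched_hours(DEVICES, MINUTES_PER_DEVICE, BATCH_SIZE)
print(f"manual: {manual:.1f} h, automated: {automated:.1f} h")
```

Under these assumed numbers the manual approach grows linearly with the device count, whereas the batched approach grows only with the number of batches.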

This section provides background knowledge on network architecture and is required for insight into some of the past and present physical, technological and infrastructural requirements deployed in the DC. This links back to the aims of the project presenting depth in perspective on the underlying and emergent technologies that prove pivotal in the migration of business-critical applications to the cloud. These topics are important for an appreciation of the complexity that SDN purports to abstract.

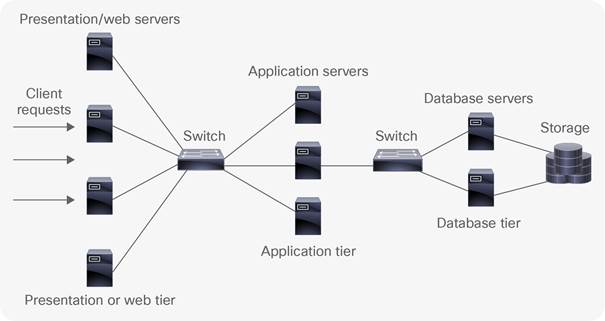

The classic infrastructure design model (Fig 3), prevalent in enterprise campuses, is also present within enterprise DCs. This hierarchical DC architecture consists of three tiers (access, aggregation and core) each delineating the design characteristics of the physical network infrastructure. Each layer has its own set of hardware and interface types supporting protocols and services that provide connectivity to digitized enterprise resources. The logical constructs configured within the infrastructure devices are specific to each tier. This provides a degree of modularity where identifiable fault characteristics are related to a particular tier of the network to ease troubleshooting. This architectural model provides the foundation of the physical network infrastructure, presenting predictable upgrade paths for scalability, when ever-increasing traffic will require more network switches and respective cabling to provide additional port density.

Access

The access layer is the network edge and serves as a demarcation between the infrastructure and End Point (EP) devices like servers, workstations and storage arrays. In enterprise and DC deployments such connections are typically through 100 Mbps, 1 Gigabit Ethernet (GigE) or 10GigE links (IEEE, 2015). Connections from the access layer link these endpoints to infrastructure devices. DC switches found at the access layer are termed End of Row (EoR) and Top of Rack (ToR). EoR switches connect DC cabinet rows together (Fig 17). ToR switches provide uplink ports for directly connected DC EPs.

Aggregation

The aggregation layer sits between the core and access layers providing a collection point for all downstream connections. Aggregation switches manage network policy boundaries and often run 10GigE and 40GigE connections in DC environments (Kozlowicz, 2013). In years gone by, legacy layer two (L2) (Appendix I) switch loop prevention functions like Spanning Tree Protocol (STP) were used at the aggregation layer to provide a logical, loop free forwarding path across the L2 domain (see Fig 7). STP has now long been replaced in modern DC environments by more favored multipathing technologies that will be covered later in this paper.

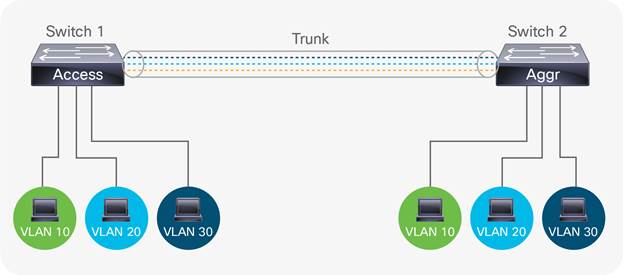

IEEE 802.1q trunking is also provided at the aggregation tier to allow the communication of multiple Virtual Local Area Networks (VLANs) between network segments (IEEE, 2012). A VLAN is a logical partition used to isolate broadcast traffic between separate computing domains. For example, when a new engineer joins the IT department and connects to the network, their computer will request an Internet Protocol (IP) address from a pool of IP addresses associated with a VLAN preconfigured for the IT department. Connecting to the preconfigured IT VLAN grants connectivity to one specific and secured set of corporate IT resources. Similarly, any other department will receive IP addresses designated for their departmental VLAN when they log into their respective corporate environments. This grants more secure and specific connectivity to resources relevant for the function of that department. In enterprise networks, departments can be segmented based on function or location. Segmenting computing domains using VLANs compartmentalizes broadcast traffic, so network data is only processed by those inside the respective VLAN, creating a security boundary or broadcast domain. For example, if HR is assigned VLAN 10, only HR devices will have visibility of data traversing within VLAN 10.

As seen in Fig 4, data for VLANs 10, 20 and 30 are transported across the trunk link connecting two network switches. This enables DC EPs with similar resource requirements shared access to computing resources without having to occupy the same physical network segment. For example, there may be two server rooms within a three storey DC, each containing a web server farm; one on the first floor and one on the second floor. Although both server farms are connected to devices in different physical locations, as long as they are both configured and connected to the same VLANs and those VLANs are trunked between floors; all connected users will have access to data within their specified VLANs irrespective of what server farm they connect to. Another example is the Marketing department being spread across three floors. As all Marketing staff require access to the same enterprise resources, they all reside within a VLAN pre-allocated to Marketing. If this VLAN is trunked between the three floors connecting to each Marketing area, all connected staff will have access to Marketing resources. This transit of VLANs between network segments is the purpose of 802.1q trunking at the aggregation layer.
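To illustrate how a trunk link keeps traffic for VLANs 10, 20 and 30 separate, the sketch below builds the 4-byte IEEE 802.1Q tag that is inserted into each Ethernet frame crossing the trunk. The tag layout (a 16-bit TPID of 0x8100 followed by a 3-bit priority, a 1-bit drop-eligible indicator and a 12-bit VLAN ID) follows the 802.1Q standard; the function names are hypothetical and chosen only for this example.

```python
import struct

TPID_8021Q = 0x8100  # Tag Protocol Identifier that marks a frame as 802.1Q tagged


def build_dot1q_tag(vlan_id: int, priority: int = 0, dei: int = 0) -> bytes:
    """Pack an 802.1Q tag: TPID (16 bits) + PCP (3) + DEI (1) + VLAN ID (12)."""
    if not 1 <= vlan_id <= 4094:  # 0 and 4095 are reserved values
        raise ValueError("VLAN ID must be between 1 and 4094")
    if not 0 <= priority <= 7:
        raise ValueError("priority must be between 0 and 7")
    if dei not in (0, 1):
        raise ValueError("dei must be 0 or 1")
    tci = (priority << 13) | (dei << 12) | vlan_id
    return struct.pack("!HH", TPID_8021Q, tci)  # network byte order


def parse_vlan_id(tag: bytes) -> int:
    """Extract the VLAN ID from a 4-byte 802.1Q tag."""
    tpid, tci = struct.unpack("!HH", tag)
    if tpid != TPID_8021Q:
        raise ValueError("not an 802.1Q tag")
    return tci & 0x0FFF


for vlan in (10, 20, 30):
    tag = build_dot1q_tag(vlan)
    print(vlan, tag.hex(), parse_vlan_id(tag))
```

The receiving switch reads the VLAN ID from the tag and forwards the frame only to ports that belong to that VLAN, which is what allows one physical link to carry several isolated broadcast domains.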

Quality of Service (QoS) is another functionality configured at the aggregation layer, allowing bandwidth (BW) capacity to be divided and prioritized for different network traffic types according to company policy or application requirements. A call center would need voice traffic prioritized over email traffic because telephone calls are their core business. A TV production company may need video traffic prioritized to ensure enough available BW to support HD streaming between multicast endpoints at peak usage.

ACLs feature at the aggregation layer and specify network port numbers, protocols and digital resources in the form of IP address ranges. These ‘network objects’ are referenced in an ACL to either permit or deny access to such resource groups on the network. ACLs filter network traffic, defining who has access to what. For example, network administrators within IT Operations will have a VLAN assigned to them mapping to a particular IP address range. Any useable IP address within this range has access to a server containing backup network configurations. If someone with an IP address outside this permitted range (i.e. Engineering) tried to gain access, an ACL would be in place to deny this. ACLs are important for enforcing trust access and security policies at the aggregation layer. Routing or Layer 3 (L3) switching (Appendix I) is also important at this tier as it allows company data to traverse between VLANs. VLANs provide segmentation at L2, defining the logical grouping of network hosts to an associated computing domain. Routers and L3 switches use routing protocols like Enhanced Interior Gateway Routing Protocol (EIGRP) and Open Shortest Path First (OSPF) to map the network topology. This mapping populates forwarding tables which are databases of ingress (inbound) and egress (outbound) interfaces on each infrastructure device. These tables contain data used by the infrastructure devices to decide which interfaces send and receive data.
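The ACL behaviour described above can be sketched with Python's standard `ipaddress` module. This is a simplified illustration, not device configuration: the address ranges for IT Operations and Engineering are hypothetical, and the sketch assumes the common first-match evaluation with an implicit deny at the end of the list.

```python
from ipaddress import ip_address, ip_network

# Hypothetical ACL protecting the backup configuration server.
# Entries are evaluated top-down and the first match decides the outcome.
ACL = [
    ("permit", ip_network("10.10.0.0/24")),  # assumed IT Operations range
    ("deny", ip_network("10.20.0.0/24")),    # assumed Engineering range
]


def is_permitted(source: str, acl=ACL) -> bool:
    """Return True if the first matching entry permits the source address."""
    addr = ip_address(source)
    for action, network in acl:
        if addr in network:
            return action == "permit"
    return False  # implicit deny when no entry matches


print(is_permitted("10.10.0.25"))  # IT Operations host
print(is_permitted("10.20.0.7"))   # Engineering host
print(is_permitted("192.0.2.1"))   # address not listed in the ACL
```

Because the final fall-through is a deny, any address range that is not explicitly permitted is blocked, which matches the trust model the paragraph describes.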

Core

The core is the backbone of the network facilitating L3 (App. I) routing services to the aggregation layer. The core not only provides a collection point for aggregation layer devices, but also provides an interconnection for Local Area Networks (LAN) to reach other campus/branch networks, through the Wide Area Network (WAN), enterprise DC or public Internet. High speed packet switching is required at the core, to route IP traffic as quickly as possible throughout the network. In modern DCs, speeds of 40GigE and 100GigE are typically seen today (Banks, 2014). Crucial design principles within the core require speed, simplicity, redundancy and high availability. To remain competitive, enterprise and service provider networks need connections to be available 24x7x365 or 99.999% of the time. For this reason, First Hop Redundancy Protocols (FHRPs) such as Hot Standby Router Protocol (HSRP) are used in the core to provide sub-second failover in the event of a network link or hardware/software failure (Cisco, 2008).
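To put the 99.999% availability target into perspective, the short calculation below converts an availability percentage into the downtime it allows per year. It is a minimal sketch that assumes a 365-day year; the function name is hypothetical.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes in a non-leap year


def allowed_downtime_minutes(availability_percent: float) -> float:
    """Minutes of downtime per year permitted by an availability target."""
    return MINUTES_PER_YEAR * (1 - availability_percent / 100)


for target in (99.9, 99.99, 99.999):
    print(f"{target}% -> {allowed_downtime_minutes(target):.2f} minutes per year")
```

At "five nines" the budget is a little over five minutes of downtime per year, which is why sub-second failover in the core matters.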

In the three tier architecture, hosts on the Local Area Network (LAN) communicate with hosts/servers on the same or separate network segments. This north-south traffic (Fig 5) flows from the client request initiated from an EP connected to the access switch. If the destination EP is on the local network segment, the request will get switched directly to the connected host. This north-south traffic pattern enjoys low latency switching between local hosts on the same network segment or rack cabinet. If the destination EP is in another broadcast domain (VLAN), the request traverses extra network hops through the aggregation layer to the core, introducing latency. The core router would then consult its routing table to decide which interface should forward the traffic towards its destination.
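The routing table lookup mentioned above can be sketched as follows. IP routers select the most specific (longest prefix) route that contains the destination address; the prefixes and interface names in this table are hypothetical and serve only to illustrate the lookup.

```python
from ipaddress import ip_address, ip_network

# Hypothetical routing table mapping destination prefixes to egress interfaces.
ROUTES = {
    ip_network("0.0.0.0/0"): "Gi0/0",     # default route
    ip_network("10.0.0.0/8"): "Gi0/1",
    ip_network("10.20.0.0/16"): "Gi0/2",
}


def egress_interface(destination: str, routes=ROUTES) -> str:
    """Return the interface of the longest prefix that contains the destination."""
    addr = ip_address(destination)
    matches = [net for net in routes if addr in net]
    if not matches:
        raise LookupError(f"no route to {destination}")
    best = max(matches, key=lambda net: net.prefixlen)
    return routes[best]


print(egress_interface("10.20.5.9"))   # most specific match is 10.20.0.0/16
print(egress_interface("10.99.1.1"))   # falls back to 10.0.0.0/8
print(egress_interface("8.8.8.8"))     # only the default route matches
```

Production devices perform this lookup in hardware rather than by scanning a list, but the selection rule is the same.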

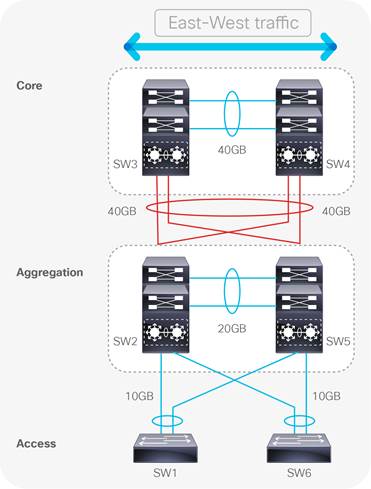

Large scale enterprise and DC environments span multi-storey buildings where remote areas of the network are cross-connected through Intermediate and Main Distribution Frames (IDF/MDF). When traffic crosses between rack cabinets or IDF/MDF boundaries (east-west), it often has to traverse the intersecting aggregation and core layers before reaching the remote destination. Each additional hop in the path introduces latency that can negatively impact business critical applications as links connecting to remote networks become saturated and oversubscribed. In Fig 6, imagine the access switches (SW1 and SW6) each have 48 ports connected to users on the corporate network, with each port having the maximum forwarding capacity of 1Gbps. If the uplinks between the access-aggregation block (2 per switch) also forward at 1Gbps, each uplink will have an oversubscription ratio of 24:1.

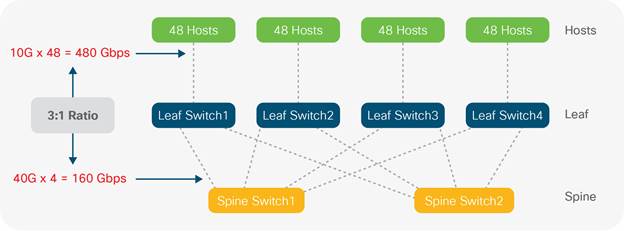

Oversubscription is a consideration required in all networks irrespective of size and is the ratio of the potential BW requirements of all connected hosts to the maximum capacity of the connecting uplinks. A general rule of thumb accepted by DC professionals is having an access layer switch with 48 x 10GigE ports connecting to an aggregation layer switch through 4 x 40GigE ports:

Ingress ports: 48 x 10GigE = 480 Gbps

Egress ports: 4 x 40GigE = 160 Gbps

Ratio: 480:160

Oversubscription ratio: 3:1
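The same calculation can be expressed as a small helper, shown here as a minimal sketch with hypothetical function names. It reproduces both the 3:1 rule of thumb above and the 24:1 ratio from the Fig 6 example (48 ports at 1 Gbps sharing 2 uplinks at 1 Gbps).

```python
from fractions import Fraction


def oversubscription(ports: int, port_gbps: int, uplinks: int, uplink_gbps: int) -> Fraction:
    """Ratio of total host-facing capacity to total uplink capacity."""
    return Fraction(ports * port_gbps, uplinks * uplink_gbps)


def as_ratio(value: Fraction) -> str:
    """Format a reduced fraction in the familiar N:M notation."""
    return f"{value.numerator}:{value.denominator}"


# Rule of thumb: 48 x 10GigE host ports, 4 x 40GigE uplinks
print(as_ratio(oversubscription(48, 10, 4, 40)))

# Fig 6 example: 48 x 1 Gbps host ports, 2 x 1 Gbps uplinks
print(as_ratio(oversubscription(48, 1, 2, 1)))
```

Using `Fraction` keeps the result exact and reduces it automatically, so 480:160 is reported as 3:1.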

If the interfaces connecting network tiers are not provisioned with enough BW to support bi-directional data transmission at wire rate, blocking and oversubscription ensues, introducing congestion and packet loss into the network. In Fig 6, imagine six servers connected to SW1, each needing to send 10GB of data (60GB in total) over to workstations connected to SW6. This east-west traffic pattern has to travel across the interconnections between source and destination network segments. As none of the connecting links have 60GB capacity, congestion, latency and packet loss would choke the network due to the oversaturation of the uplink connections. There will always be a level of oversubscription within any design but this should be balanced against the application and user requirements; 3:1 or better is generally recommended. QoS can be implemented to ensure that priority traffic types are allocated BW before link saturation occurs.

Although all hosts and applications can theoretically transmit data simultaneously, in practice this is not the case as request/response messages are interleaved as they traverse the network. Network traffic is usually intermittent and bursty, as invocation of resource is traditionally executed through request/response messages i.e. Dynamic Host Configuration Protocol (DHCP), File Transfer Protocol (FTP), Simple Mail Transfer Protocol (SMTP), Domain Name System (DNS) etc. Network operators employ acceptable oversubscription ratios pertaining to overall network design; striking a balance between performance and expense. Oversubscription is a vital consideration in enterprise DCs where east-west traffic patterns constitute routine traffic patterns. This consideration is even more paramount in large scale DCs where data is forwarded between hundreds or even thousands of physical and virtual EPs (Bloomberg, 2014).

Large scale DCs have to manage a tremendous amount of complexity across the network infrastructure as well as the applications sitting on top. Resource requests and inter-server (server-to-server) traffic flows abound, driven by BW intensive applications like High Performance Computing (HPC), High Frequency Trading (HFT), distributed computing, IP storage and Virtual Machine (VM) clustering. These are all examples of routine east-west traffic flows within modern DCs that contribute to this complexity. Unlike in campus deployments, the east-west traffic created by server-to-server conversations is ubiquitous within DC environments (Alizadeh & Edsall, 2013).

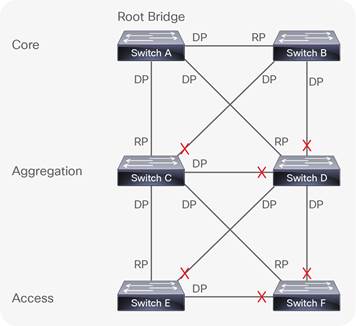

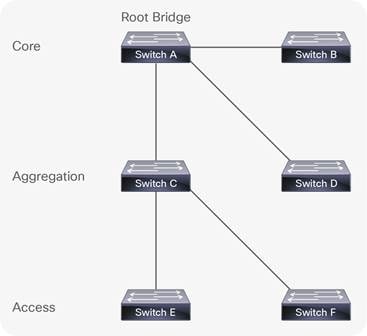

Server-to-server communication across the classical hierarchical architecture introduces congestion and network choke points, not only because of oversubscription but also because of the L2 path redundancy introduced to maintain connectivity if a switch port or circuit fails. This redundancy creates the possibility of switching loops, where traffic is sent on an infinite circular path within the three-tier architecture. Switching loops occur in L2 networks with redundant paths between infrastructure devices: switches that learn the same addresses on conflicting interfaces can get stuck continually flooding VLAN traffic, creating broadcast storms and Media Access Control (MAC) table instability. Spanning Tree Protocol (STP) manages L2 path redundancy to avoid these loops. Once STP is enabled on the infrastructure devices, its algorithm elects the Root Bridge (RB). The RB is the center (root) of the inverted (spanning) tree that provides a single logical forwarding path through the entire switching fabric for each virtual network (VLAN). To achieve this loop free path, the fastest (lowest cost) connection towards the RB on each infrastructure device becomes its Root Port (RP), and one port on each link segment becomes the Designated Port (DP); all other ports are blocked, which removes the redundant links (Fig 7a and 7b).

As Fig 7a shows, there are 11 links in the three-tier architecture. Once STP calculates the best loop free path, redundant links are blocked and the resultant spanning tree is formed, ready to forward VLAN traffic. As seen in Fig 7b, the number of usable links in the topology is reduced to 5, a loss of roughly 55% (6 of the original 11).
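The effect of blocking redundant links can be approximated with a short sketch. The topology below is hypothetical (six switches and eleven links chosen to match the link count quoted for Fig 7a; the switch names are invented), and a breadth-first search stands in for the real STP election, which uses bridge IDs and port costs rather than hop count.

```python
from collections import deque

# Hypothetical six-switch, eleven-link topology (illustrative names only).
LINKS = [
    ("core1", "core2"),
    ("core1", "agg1"), ("core1", "agg2"),
    ("core2", "agg1"), ("core2", "agg2"),
    ("agg1", "agg2"),
    ("agg1", "acc1"), ("agg1", "acc2"),
    ("agg2", "acc1"), ("agg2", "acc2"),
    ("acc1", "acc2"),
]


def spanning_tree(links, root):
    """Breadth-first search from the root, keeping the first link that reaches each switch."""
    neighbours = {}
    for a, b in links:
        neighbours.setdefault(a, []).append(b)
        neighbours.setdefault(b, []).append(a)
    visited = {root}
    forwarding = []
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for peer in neighbours[node]:
            if peer not in visited:
                visited.add(peer)
                forwarding.append((node, peer))  # this link stays in the tree
                queue.append(peer)
    return forwarding


forwarding = spanning_tree(LINKS, root="core1")
blocked = len(LINKS) - len(forwarding)
print(f"{len(forwarding)} forwarding links, {blocked} blocked")
print(f"{blocked / len(LINKS):.0%} of links unused")
```

Any loop free tree over six switches needs exactly five links, so whichever switch is chosen as the root, six of the eleven links carry no traffic.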

Such wastage is costly in both enterprise and cloud environments because congestion and network bottlenecks are introduced when all but one forwarding path are blocked by STP. In this topology, redundant fiber links remain unused until a change in the STP topology causes an alternate path to be calculated. Unused links provide no Return on Investment (ROI) and raise Total Cost of Ownership (TCO). Optical cables and modules are expensive, so links need to be non-blocking in order to justify the investment. In addition, the number of hops required for server-to-server, east-west traffic flows adds latency to transactions between hosts. As big data analytics (with tremendous server-to-server communication requirements) increases in volume and importance in the data center, this latency becomes even more of a concern. East-west traffic flows, network convergence, oversubscription and STP are some of the limitations that have prompted the exploration of alternative architectures and technologies in DC environments.

With background given on the traditional hierarchical model and its limitations, the following section provides information on the preferred architectural model used within modern DC environments. This provides an understanding of the physical infrastructure that powers DC operations before looking at the software overlay technologies that sit on top.

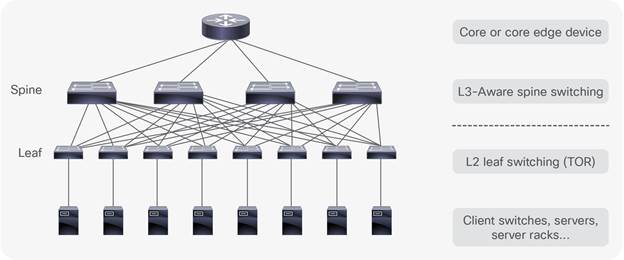

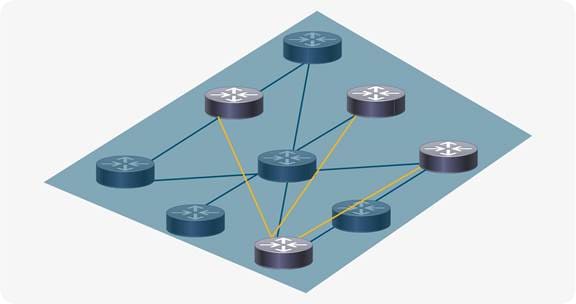

In the DC, the successor to the three-tier model is spine-leaf. Spine-Leaf is the de facto reference architecture used in modern DCs (Wang and Xu, 2014) and addresses the limitations presented by the hierarchical model and STP. It is an adaptation of the Clos network developed in 1952 (Hogg, 2014) and comprises a flat, non-blocking switching architecture where the core is divided across a number of modular spine switches. Each spine switch connects to every leaf. Leaf switches are located at the top of the rack cabinets (Top of Rack, ToR) for direct server/EP connectivity. As each leaf switch is connected to all spine switches, this creates a flat, densely connected topology where all leaf switches are equidistant from one another (Fig 8b). At any given moment, any DC EP is no more than three hops away from an intended destination inside the DC. This gives each device access to the full BW of the fabric, building a simplified foundation of predictable performance (Alizadeh and Edsall, 2013).

Applications, services and DC EPs can communicate with endpoints on the opposite side of the DC by passing through the directly connected local leaf switch, then a connecting spine switch, and finally the remote leaf switch connected to the destination host (Fig 8b). This brings consistent and predictable latency and BW through the DC, facilitating horizontal scaling for east-west traffic requirements. Fig 8a depicts an example Spine-Leaf topology with two spines, each connecting to four leaf switches that in turn each have 48 connected hosts. The 3:1 oversubscription ratio is shown, with each group of 48 hosts connecting through 10 Gbps connections and 4 x 40 Gbps uplinks from each spine to every leaf.

DC operators need to maximize utilization of invested resources. Although foundational to networking, the hierarchical model provides a forwarding path confined to the cable or EtherChannel permitted by STP. This introduces choke points between tiers, creating a blocking architecture that cannot scale beyond a certain point and wastes capital investment. In Spine-Leaf designs, the uniform, densely connected switches place each EP no more than three hops away from the destination (Fig 8b). This equidistance allows simplified and predictable scaling through the DC, as low latency traffic is load balanced across multiple paths of equal cost.

Technologies such as Shortest Path Bridging (SPB), Transparent Interconnection of Lots of Links (TRILL) and Equal Cost Multi-Path (ECMP) routing are options that provide a non-blocking, multiple path architecture, maximizing cross-sectional BW.
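To illustrate how traffic can be spread across equal cost paths, the sketch below hashes a flow's 5-tuple to choose one of several spine switches. It is an assumption-laden illustration: the spine names are invented, and SHA-256 is used only as a convenient stand-in for the hash functions that switching hardware actually implements.

```python
import hashlib

# Hypothetical set of equal-cost next hops from one leaf switch.
SPINES = ["spine1", "spine2", "spine3", "spine4"]


def pick_spine(src_ip, dst_ip, src_port, dst_port, proto="tcp"):
    """Map a flow's 5-tuple to one spine so every packet of the flow takes the same path."""
    key = f"{src_ip}|{dst_ip}|{src_port}|{dst_port}|{proto}".encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:4], "big") % len(SPINES)
    return SPINES[index]


# Many flows between the same two hosts, differing only in source port.
usage = {spine: 0 for spine in SPINES}
for port in range(40000, 41000):
    usage[pick_spine("10.0.1.10", "10.0.2.20", port, 443)] += 1
print(usage)
```

Hashing per flow rather than per packet keeps the packets of a single conversation in order, while different conversations are distributed across all available uplinks.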

This non-blocking architecture enables infrastructure devices to forward packets bi-directionally at line rate through the switching fabric, with the ability to scale to hundreds of thousands of ports. It is better suited to the intensive east-west traffic patterns that are routine in today’s DC operations. Oversubscription and queue buffering can also be tailored to manage traffic bursts when shifting large workloads across the DC (Alizadeh & Edsall, 2013).

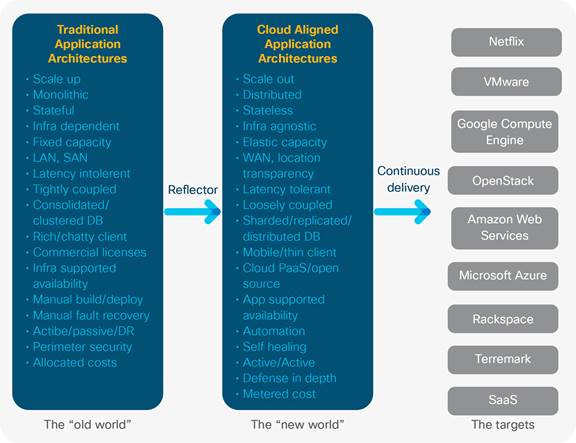

Now that we have introduced basic infrastructure concepts, we can delve into the application landscape and the changes seen in recent years. This provides context on the evolution of applications from the monolithic architectures of the past to the cloud native applications of the future. Forward thinking enterprises are now considering the challenges around migrating on premise applications to the cloud. The next section describes traditional, monolithic applications, how they differ from highly available cloud native applications, and the transition from owning and maintaining on-site application architectures to consuming pay as you use cloud computing services. This is important because the SDN approach is most powerful when it is used to provide cloud native applications with the speed and agility required for highly available, self-healing, autonomic network services. The health and resilience of a cloud native architecture depend upon the capabilities afforded by an underlying, programmable infrastructure.

As we look at new cloud native application architectures and their capabilities, it’s also important to note that the process of developing and supporting applications has changed dramatically in the past few years. The traditional waterfall development process has been disrupted by agile development practices. Agile development has led to continuous integration, which led to continuous delivery, which led to DevOps. Each new phase enables application developers to run more development processes in parallel, taking advantage of new automated application code management, testing, and deployment tools. This dramatically increases application quality and significantly accelerates the development and deployment of new business capabilities, which has driven the adoption of these practices in the IT world. However, these new development processes require a much closer relationship among the traditionally separated teams of customers (who define requirements), coders (who develop the code), code testers (who provide quality, security and integration standards and testing), and code maintenance/operations teams. As we’ll see soon, this parallels the closer relationships that are required by new cloud native application architectures.

Traditionally, when service providers build or buy applications to serve new business outcomes, the web, application and database components are provisioned in silos connected by the underlying network infrastructure (Fig 9).

This methodology represents a complex workflow where continuous delivery application development is impeded by the compartmentalization of each application tier. For a new application to be brought into production, the requirements of that application are set by the application development, web and database teams depending on its particular features (Richardson, 2014). The network team is expected to distil and translate these requirements into configurations the network can understand. Classically this configuration of infrastructure involves a device level process where each infrastructure device is configured one by one through the CLI. Such configurations include:

● Provisioning the correct VLANs and mapping them to the correct subnetworks so the right IP address ranges can reach the application.

● Creating security policies and ACLs to ensure the correct level of access control is attributed to the clients within the permitted IP address ranges, while limiting or excluding others to prevent unauthorized access.

● Providing load balancing to avoid choke points and bottlenecks by distributing application traffic across redundant paths through the network.

● Configuring QoS so that delay or jitter sensitive traffic like voice, video or HFT data can be prioritized over more resilient/less critical forms of traffic traversing the network.

● Configuring appropriate routing and switching to ensure the secure and reliable transportation of data end-to-end. This is important whether the traffic remains within the originating administrative domain or has to cross organizational boundaries to an external entity.

● Providing Network Address Translation (NAT) and IP prefix lists to make the best use of limited IPv4 address space and to keep private, internal IP address ranges from being exposed to the public internet, where they can cause problems with the routing of application traffic outside of the originating enterprise.

These are but a few examples of the complex configuration tasks required on each infrastructure device within a DC potentially housing hundreds of network devices (Fig 15). For all this work to get approval from the business, scheduled maintenance windows have to be arranged, with advisory boards scrutinizing the intended procedures to minimize the risk of a negative impact on production services.

Depending on the size of the project or impact of the network change, this whole process can become convoluted and protracted, putting pressure on project timelines and budget justifications. Such frustrations have contributed to the formation of DevOps to accelerate application/feature delivery in agile environments. This is why innovative automated approaches to network provisioning are being explored, as manual provisioning is not scalable in large scale cloud environments (ONF, 2016).
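As a contrast to configuring each device by hand, the sketch below renders the same VLAN and ACL intent for several devices from one data model. Everything in it is hypothetical: the device names, VLAN and addresses are invented, and the CLI lines are written in a generic IOS-like style for illustration rather than validated against any particular platform.

```python
from string import Template

# Hypothetical intent for one application tier; all values are illustrative.
APP = {
    "vlan_id": 110,
    "vlan_name": "WEB_TIER",
    "subnet": "10.1.10.0",
    "wildcard": "0.0.0.255",
}
DEVICES = ["leaf-01", "leaf-02", "leaf-03"]

# Generic IOS-like snippet; real syntax differs between platforms.
SNIPPET = Template(
    "hostname $device\n"
    "vlan $vlan_id\n"
    " name $vlan_name\n"
    "ip access-list extended ${vlan_name}_IN\n"
    " permit tcp $subnet $wildcard any eq 443\n"
    " deny ip any any\n"
)


def render_configs(devices, app):
    """Return a mapping of device name to its rendered configuration text."""
    return {device: SNIPPET.substitute(device=device, **app) for device in devices}


configs = render_configs(DEVICES, APP)
print(configs["leaf-01"])
```

Because the intent lives in one place, adding a fourth leaf or changing the permitted port is a one-line change to the data rather than a repeated CLI session on every device.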

Once the new application is finally up and running in the DC (e.g. a large DB application), it will typically live on purpose-built infrastructure (bare metal or virtual machine installation) for its entire lifecycle. Classically, such applications have been proprietary, closed and monolithic in nature, which suited IT silos with static delivery models that elicited infrequent change. This methodology represents a pre-DevOps approach that is now antiquated when set against the current trend towards cloud native micro-services (Fig 11). The IT industry is a world of constant change, with many companies wishing to take advantage of the operational cost efficiencies cloud computing delivers. Innovations in hyper-convergence are now commonplace in the DC, where compute, storage and network virtualization are all tightly integrated through software centric architectures (Sverdlik, 2015). This highlights the application centric trend, which affords tighter control over the management of physical infrastructure through Graphical User Interfaces (GUIs) and software tools.

Interconnected DCs must efficiently manage the connectivity requirements of geographically distributed computing services across heterogeneous IT domains. To manage the scale and complexity of this conglomeration of network requests, services, functions and processes, network programmability and automation are paramount (Mayoral et al., 2015).

The proliferation of dynamic east-west traffic patterns between multi-tier applications, within and between DCs, requires additional layers of sophistication from the infrastructure. This will enable operators to remain competitive, keeping up with the pace of innovation seen in forward thinking DC environments (Kleyman, 2016). Forward thinking cloud operators manage their infrastructure with an orchestration layer, enabling them to accelerate continuous delivery through the automated provisioning and tearing down of computing services (Mayoral et al., 2015). The programmability afforded through the use of open APIs cuts the time taken by repetitive configuration tasks from months to hours (Wibowo, 2013). The automation of administrative tasks through orchestration and programmability tools yields new operational efficiencies from the enterprise IT investment (sdxcentral, 2015).

With the rise of cloud computing and the prevalence of distributed computing architectures, we are witnessing a trend within the DC similar to Web Service Architectures (WSAs), where monolithic web applications are decomposed into discrete service components that constitute a cloud ready/native architecture (Gracely, 2015). Applications that were once closed, inflexible islands of functionality are now deconstructed into modular, reusable micro-service components that developers can manipulate via Application Programming Interfaces (APIs) that run service calls for software functions. The loosely coupled, platform agnostic nature of cloud micro-service components affords reusability, control and runtime analytics, providing administrative flexibility and the generation of new revenue streams (Cisco, 2014c).

Enterprises are looking towards cloud ready/native applications that are highly available, extensible, and reliable. This ambition is not matched by the static, error prone, device level network implementation methods currently used to provision infrastructure. The capabilities of the network must facilitate the requirements of the services it supports with simplicity, ease, speed and flexibility. This flexibility is offered through open APIs that support a programmable infrastructure (Howard, 2016).

Table 1. Comparison between traditional and cloud native applications - Adapted from: Baecke (2015)

| | Traditional | Cloud Native |
| --- | --- | --- |
| Architecture | Monolithic/Layered | Micro services |
| State | Steady | Fluid/Ephemeral |
| Updates | Infrequent (monthly/yearly) | Frequent (days/weeks) |
| Availability | Infrastructure Redundancy | Highly available, resilient application architecture |
| Scalability | Hard to scale and build out | Distributed, easily scalable, service reuse |

Enterprises are increasingly contemplating ways to lower operational costs while heightening productivity through the migration of business applications to the cloud. Rather than being deployed on premise on dedicated hardware, cloud native applications are deployed within containerized, logical instances housed on virtual servers within a provider’s DC. These applications take the form of micro services defining discrete pieces of functionality that are easily updated, replicated and migrated across or between DCs without any down time (Andrikopoulos et al., 2012). Cloud native micro-services leverage multi-tenancy to supply multiple consumers with isolated, logical instances of the applications they connect to. As in any IT environment, hardware failures can occur at any given moment. Because of the number of cloud subscribers, operators carry an extra burden of responsibility to their huge consumer base. For this reason, it is paramount that the implemented cloud architecture has resilience and fault tolerance built into its design principles (Balalaie et al., 2015).

Cloud native applications differ from their monolithic counterparts in that they have self-healing, fault tolerant capabilities, derived from the application being aware of, and interacting with, the health of the underlying infrastructure through southbound APIs. For applications to truly be cloud native, the underlying infrastructure and application need to form a closed feedback loop, communicating real time analytics about an application contract between the two in order to maintain optimal performance (West, 2015). This characteristic makes the application resilient to network disruptions, enabling the elastic, adaptive and proactive preservation of its uptime irrespective of any underlying network disruption (Goodwell, 2016).

In recent years, we have witnessed a shift from proprietary hardware vendors supplying infrastructure devices to generic ‘white boxes’ (O’Reilly, 2015). Such white boxes are infrastructure devices with open Operating Systems that developers and network operators can interface with using data formats such as Extensible Markup Language (XML) and JSON, exchanged over Representational State Transfer (REST) APIs. Such programmatic methods provide powerful options for developers to write scripts, tools and orchestration templates that automate routine administration processes. Not only can these innovations provide analytics on the status of the underlying infrastructure; they can also be used to provide taxonomy and telemetry regarding the application’s health and Runtime Environment (RTE).
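As a small illustration of the data formats mentioned above, the sketch below serializes one interface description as both JSON and XML using only the Python standard library. The field names are hypothetical and do not follow any vendor's schema; a real device API would define its own data model.

```python
import json
import xml.etree.ElementTree as ET

# Hypothetical interface description; keys are illustrative, not a vendor schema.
interface = {
    "name": "Ethernet1/1",
    "description": "uplink to spine1",
    "enabled": True,
    "mtu": 9000,
}


def to_json(data):
    """Serialize the dictionary as a JSON document."""
    return json.dumps(data, sort_keys=True)


def to_xml(data):
    """Serialize the dictionary as a flat XML document rooted at <interface>."""
    root = ET.Element("interface")
    for key, value in data.items():
        child = ET.SubElement(root, key)
        # XML has no native types, so booleans are written as true/false text.
        child.text = str(value).lower() if isinstance(value, bool) else str(value)
    return ET.tostring(root, encoding="unicode")


print(to_json(interface))
print(to_xml(interface))
```

Either document could form the body of a REST request to a programmable device; which format is accepted depends entirely on the API in question.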

The application RTE is established once the application has executed and is able to send and receive instructions to and from the infrastructure (RAM, CPU etc.). In a cloud native environment, the RTE of an application can be written to access real time analytics on infrastructure resources like compute, storage, BW and latency. Cloud Native Applications (CNAs) contain an application contract which defines the minimal runtime Service Level Agreement (SLA) required from the infrastructure for optimal performance (West, 2015). If network resources fail to meet the terms of the contract, e.g. jitter or latency rises above a particular threshold, remediation options will be available depending on the real-time availability of computing resources within the administrative domain. Such options can include the resizing or provision of additional compute instances. Another option could be to migrate any negatively affected VMs to a more stable network segment, or even to another DC that is central to the majority of the application’s subscribers. In any case, through open APIs, the programmability of the infrastructure enables the application to become intelligent enough to reach a new plateau in resilience, high availability and fault tolerance (West, 2015).

As an analogy for this mechanism, consider MS Windows 10, which has the following minimum hardware requirements:

● ≥ 1 GHz processor

● ≥ 2 GB RAM

● ≥ 20 GB storage for 64-bit OS

● 1 NIC

These hardware attributes are required for the OS to function on a bare metal installation. Similarly, cloud native applications use a run-time contract to inform the infrastructure of an SLA for optimal performance. If a monolithic HFT application has a run-time requirement of <50ms jitter and 10Mb/s BW, then at any point where the network cannot deliver this, the user experience suffers, as neither the application nor the infrastructure can remediate the issue without manual intervention.

In a cloud native environment, the application sends periodic keep-alives, probing the infrastructure for status metrics on these contractual requirements (Cane, 2014). If the available resources fall below these requirements, the IaaS layer will provision the required resources to mitigate any potential degradation to the user experience. This intelligence operates at the network infrastructure level, leaving end users completely unaware of any degradation in service (Stine, 2015).
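The contract check described above can be sketched as follows. This is a simplified illustration: the class and function names are assumptions, the thresholds reuse the <50ms jitter and 10Mb/s figures from the HFT example, and the mapping from violation to remediation is a placeholder policy rather than the behaviour of any real IaaS layer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Contract:
    max_jitter_ms: float        # jitter must stay below this value
    min_bandwidth_mbps: float   # bandwidth must not drop below this value


@dataclass(frozen=True)
class Metrics:
    jitter_ms: float
    bandwidth_mbps: float


def violations(contract, metrics):
    """Return the names of the contract terms the current metrics fail to meet."""
    problems = []
    if metrics.jitter_ms >= contract.max_jitter_ms:
        problems.append("jitter")
    if metrics.bandwidth_mbps < contract.min_bandwidth_mbps:
        problems.append("bandwidth")
    return problems


def remediate(problems):
    """Placeholder policy that picks one of the remediation options named in the text."""
    if not problems:
        return "no action"
    if "bandwidth" in problems:
        return "migrate VM to a more stable network segment"
    return "resize or provision additional compute instances"


hft_contract = Contract(max_jitter_ms=50, min_bandwidth_mbps=10)
probe = Metrics(jitter_ms=65, bandwidth_mbps=8)   # hypothetical keep-alive result
print(violations(hft_contract, probe), "->", remediate(violations(hft_contract, probe)))
```

In a real deployment the probe values would come from infrastructure telemetry and the remediation step would call an orchestration API; here both are stubbed so the feedback loop itself is visible.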

Popular, vendor specific network appliances are constrained by the tight coupling of closed software to proprietary hardware platforms. The journey towards a cloud native architecture requires not only a re-imagining of how the application interacts with the underlying hardware, but also the opening up of proprietary hardware to expose the APIs necessary to facilitate a truly resilient, self-healing, programmable architecture. To this end, the industry is witnessing a growth in standards based efforts working towards an ecosystem of open, interoperable technologies that enable tighter control and flexibility for engineers and cloud providers who embrace innovation. A cloud native architecture gives providers the ability to raise ROI while lowering TCO, as the investment in hardware and DC capacity is protected by the programmatic capabilities of the infrastructure. Cloud operators require the ability to elastically scale and adapt their computing services to support dynamic market, technological and business transitions. Cloud native architectures combined with a programmable infrastructure are steps towards achieving these business objectives.

OpenStack is a popular, open source IaaS solution that uses a set of configurable software tools for building and managing public and private cloud computing platforms. Managed by the non-profit OpenStack Foundation, OpenStack enables the rapid deployment of on-demand computing instances (e.g. VMs) that developers can interface with to test and run code. This code normally contributes to the functionality of the micro-services users subscribe to. Developers interact with OpenStack service components via RESTful APIs. This enables the automation of interrelated projects that manage the processing, storage and networking resources utilised by the upper cloud computing layers. OpenStack rapidly provisions logical computing instances that can be elastically spun up, scaled down and released on demand in minutes. This gives the cloud operator tight control over elastic computing services while providing flexible and cost effective management of cloud infrastructure (Denis et al., 2015).
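To give a flavour of the RESTful interaction mentioned above, the sketch below builds (but does not send) a token request for the Identity service. The endpoint URL, user, password and project are hypothetical placeholders, and the body follows the password-authentication layout of the Identity API v3 as it is generally documented; it should be checked against the release actually deployed.

```python
import json
import urllib.request

KEYSTONE_URL = "https://keystone.example.com:5000"  # hypothetical endpoint


def build_token_request(user, password, project, domain_id="default"):
    """Build a POST request asking the Identity service for a project-scoped token."""
    body = {
        "auth": {
            "identity": {
                "methods": ["password"],
                "password": {
                    "user": {
                        "name": user,
                        "domain": {"id": domain_id},
                        "password": password,
                    }
                },
            },
            "scope": {"project": {"name": project, "domain": {"id": domain_id}}},
        }
    }
    return urllib.request.Request(
        url=f"{KEYSTONE_URL}/v3/auth/tokens",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder credentials; nothing is sent over the network in this sketch.
request = build_token_request("demo-user", "demo-password", "demo-project")
print(request.get_method(), request.full_url)
```

Against a live deployment the request would be sent with `urllib.request.urlopen(request)`, and the issued token would then accompany subsequent calls to services such as Nova or Neutron.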

OpenStack handles the scaling of reusable computing instances in two ways: vertically and horizontally. Vertical scaling is essentially a hardware upgrade instantiated in software. A cloud provider may have a physical server running one computing instance that hosts a new service. This service may have less than desirable response times because its resources are overrun by too many subscribers. To mitigate this, the provider may resize the compute instance powering the service to provide more CPU cores, RAM etc. This may alleviate the issue for a time, but it is capped by the amount of physical hardware resource available on the server.

Horizontal scaling is where the service is run on multiple servers that each run the required service within two or more computing instances. A virtual load balancer can then be used to evenly distribute customer traffic between the computing instances spread across the multiple servers. This allows enormous scaling potential over the vertical method as more servers can be added behind the load balancer with additional hardware added as needed (Grinberg, 2015). OpenStack provides a critical set of tools that provide operators with the agility, flexibility and scale required to manage complexity in the cloud. This in turn enhances and accelerates business objectives through the orchestration of value added cloud based services.
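The difference between the two approaches can be sketched in a few lines. The names below are illustrative assumptions and nothing here calls OpenStack itself: `resize` models the hardware cap on vertical scaling, while `RoundRobinBalancer` models a virtual load balancer distributing requests across instances.

```python
from itertools import cycle


def resize(requested_vcpus, host_vcpus):
    """Vertical scaling: an instance can never be given more than the host owns."""
    return min(requested_vcpus, host_vcpus)


class RoundRobinBalancer:
    """Horizontal scaling: spread requests evenly over a growing pool of instances."""

    def __init__(self, instances):
        self.instances = list(instances)
        self._rotation = cycle(self.instances)

    def add_instance(self, name):
        self.instances.append(name)
        # Restart the rotation so the new instance is included.
        self._rotation = cycle(self.instances)

    def route(self):
        """Return the instance that should serve the next request."""
        return next(self._rotation)


print(resize(requested_vcpus=64, host_vcpus=32))   # capped by the physical server

balancer = RoundRobinBalancer(["vm-a", "vm-b"])
print([balancer.route() for _ in range(4)])
balancer.add_instance("vm-c")                       # scale out behind the balancer
print([balancer.route() for _ in range(3)])
```

The vertical path stops at the size of one server, whereas the horizontal path keeps accepting new instances for as long as servers can be added behind the balancer.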

Table 2. OpenStack cloud service components – Source: Lawrence (2016)

| OpenStack Service | Function |
| --- | --- |
| Identity Service (Keystone) | Provisions users, groups, roles, projects, domains |
| Compute (Nova) | Schedules VMs, manages their lifecycle |
| Image Service (Glance) | Provides data assets (VM images, Heat templates) |
| Networking Service (Neutron) | Provides Software Defined Networking |
| Object Storage Service (Swift) | Provides scalable, persistent object store |
| Block Storage Service (Cinder) | Provides persistent block level storage |
| Orchestration Service (Heat) | Orchestrator with auto-scaling functionality |
| Metering Service (Ceilometer) | Collects metering probes from other services |
| Dashboard (Horizon) | Provides web based interface for other services |

Network administration in the cloud

The cloud computing industry is witnessing exponential growth, with new customers and businesses subscribing to more services every day (Gartner, 2016). This growth is underpinned by the explosion in agile development teams adopting DevOps approaches, which accelerate the software feature releases used to maintain and develop cloud consumption models. Server virtualization and, to a lesser extent, storage virtualization have greatly increased the number of application services used throughout enterprise DCs, giving rise to large scale multi-tenancy cloud computing architectures that introduce a tremendous amount of complexity into the network (Christy, 2012).

Networks are a critical component of any IT investment. Cloud computing environments are amongst the most complicated ecosystems of technologies and services seen in the industry. Current network administration practices are clunky, complicated and time consuming. The same manual, device level, CLI configuration process used 30 years ago is still heavily used by engineers today (Arellano, 2013). The CLI alone as an administrative tool is not flexible enough to manage the increasing complexity witnessed in large scale DC environments.

In cloud environments, operators require application architectures that provide the rapid provisioning and scaling of computing services on demand, providing business agility and continuity. This speed and flexibility requires the underlying infrastructure to also be open, flexible and fault tolerant to support high performing cloud computing services. Server virtualization allows multiple Virtual Machines (VMs) to be provisioned in minutes, with each machine capable of housing multiple applications and each subscriber having an isolated slice of the applications/services they pay for. These VMs can easily be migrated, released and scaled up and down anywhere within or between DC environments. The explosion of virtual endpoints places increasing demands and complexity on the DC network (Ziolo, 2015). Cloud operators cannot remain competitive by relying solely on traditional network administration practices, because the rigidity and limitations that physical networks introduce work against the fluidity and extensibility of the services they support (ONS, 2014). Making a new application secure and accessible through the most optimal paths across the network can take hours at best, compared to the minutes taken to spin up the VMs hosting it. Agile development teams and server virtualization capabilities are driving the demand for networks to become even faster and more sophisticated in their orchestration of cloud computing services. This calls for a fresh approach with tighter control and extensibility in how network services are provisioned, maintained and monitored.

As manual network configuration is an iterative process performed by humans, there is always an associated risk to existing production services should something be misconfigured. For this reason, network teams implementing a new project submit change management request tickets detailing each configuration step required to execute administrative tasks. Although change management provides governance over the protection of production services, it does so at the expense of continuous delivery. The many corporate processes required for change management approval impact service execution timelines. The configuration of the 100+ network devices typically seen in the DC carries associated risks. These risks include human error and any negative impact on existing production services, which can result in loss of revenue. This presents a choke point in service delivery, as agile development teams have to wait for the network before being able to rapidly test and release new service features.

Shadow IT is a product of this culture, where frustrated development teams employ public cloud services to circumvent corporate IT. This raises real concerns for the business, as the enterprise is effectively paying for its private, Intellectual Property (IP) protected data to be hosted in the public cloud. The potential security, compliance and regulatory ramifications are significant. Operators therefore need to find secure ways to mobilize and accelerate their infrastructure internally (Petty, 2016).

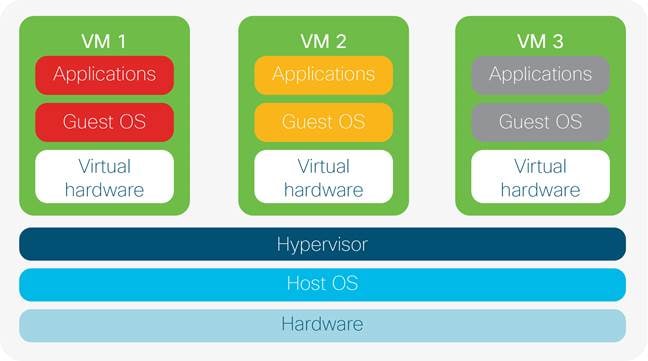

Before virtualization, the classical client-server model consisted of a client request made to a physical server hosting web application software that responds accordingly to each incoming request. The number of simultaneous connections this server can handle is limited by the maximum capacity of the hardware the server OS is running on. To scale further, server farms/clusters are employed to add more resources, but this raises TCO as additional hardware investment is required. Scaling through physical hardware alone is not sustainable in DC environments due to finite floor space, environmental considerations and power/cooling constraints. Duryee (2014) reported a leading cloud provider serving more than 244 million customers. It would be untenable to support such a colossal user base using physical infrastructure alone. Virtualisation was introduced within the DC to provide scale through multi-tenancy (Kajeepeta, 2010).

Virtualisation software is a specialised OS running on a physical server that allows multiple operating system instances to be co-located on it. These instances run on a hypervisor, a VM management system used to deploy and administer VMs. The hypervisor provides a layer of abstraction that allows multiple guest OSs to share a physical server. The physical hardware resources can then be divided in software and allocated amongst multiple VM instances. Virtualisation is very popular in DCs because it enables multi-tenancy. All virtual machines are logically separated and thus have exclusive resources and network connections that are isolated from each other. When subscribers connect to cloud services, they are effectively connecting to a logical instance within a VM, giving them their own slice of the service resources. This is depicted in Fig 12a/b, where all three VMs share the same physical hardware resources and host operating system. Each VM is containerized within its own logical environment, which can host a guest operating system housing multiple applications for cloud service subscribers to consume as web based services.
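The idea of dividing physical resources in software can be sketched as a simple bookkeeping exercise. The `Host` class below is a hypothetical model and not the interface of any real hypervisor; in particular it never overcommits resources, which production hypervisors often do.

```python
class Host:
    """Toy model of a physical server whose resources are carved up between VMs."""

    def __init__(self, vcpus, ram_gb):
        self.free_vcpus = vcpus
        self.free_ram_gb = ram_gb
        self.vms = {}

    def create_vm(self, name, vcpus, ram_gb):
        """Reserve an exclusive slice for the VM; return False if the host cannot fit it."""
        if vcpus > self.free_vcpus or ram_gb > self.free_ram_gb:
            return False
        self.free_vcpus -= vcpus
        self.free_ram_gb -= ram_gb
        self.vms[name] = {"vcpus": vcpus, "ram_gb": ram_gb}
        return True

    def delete_vm(self, name):
        """Release the VM's slice back to the host."""
        slice_ = self.vms.pop(name)
        self.free_vcpus += slice_["vcpus"]
        self.free_ram_gb += slice_["ram_gb"]


host = Host(vcpus=16, ram_gb=64)   # illustrative server size
for tenant in ("vm1", "vm2", "vm3"):
    print(tenant, host.create_vm(tenant, vcpus=4, ram_gb=16))
print("vm-large", host.create_vm("vm-large", vcpus=8, ram_gb=32))  # does not fit
print("free:", host.free_vcpus, "vCPUs,", host.free_ram_gb, "GB RAM")
```

Each VM receives its own reserved slice, mirroring the isolation between tenants, while the refusal of the oversized request shows that the physical server remains the ultimate limit.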

Although larger servers with more physical resources are required to support multi-tenancy, virtualization allows service providers to reduce their physical server count while making fuller use of the hardware, because the hypervisor allocates and scales hardware resources efficiently. Maintenance costs, power/cooling, carbon footprint and DC floor space are also reduced through the use of virtualization software, providing operational cost efficiencies for providers. In addition, administrators can interface with the hypervisor using built-in tools to customize the virtual environment.

Resource isolation and multitenancy are advantageous in cloud environments as hardware resources are carved up and distributed between multiple guest operating systems (e.g. Windows, Ubuntu), and a single physical server can scale to run hundreds of VMs. VMware’s ESXi hypervisor, for example, can theoretically run up to 512 VMs per server (VMware, 2013). Although VMware, Kernel-based Virtual Machine (KVM) and Quick Emulator (QEMU) are popular industry hypervisor choices, there are many flavors of virtualization software employed within the industry (Hess, 2016). An example is Amazon’s Elastic Compute Cloud, which allows globally distributed clients to run applications on a set of VMs that exist in the public cloud. Securely managing and scaling such a service would be untenable if limited to physical hardware and manual network administration practices.

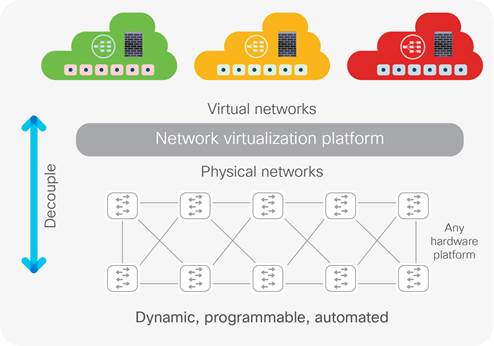

An issue with hosting multiple VMs on a physical server is that the server becomes a single point of failure for all hosted services if it goes down. However, the flexibility of provisioning, copying and migrating VMs serves to justify virtualization’s position within the DC. Providers can also mitigate this risk by clustering redundant servers that mirror the VM configuration. Virtualisation is not limited to servers but also extends to storage and the network. Storage virtualization is where multiple storage arrays are clustered and managed via one administrative interface. Network virtualization (Fig 12b) is where network components (like NICs, firewalls and switches) are replicated as logical software components that are decoupled from the physical hardware. The advantage is seamless integration of network functionality across divergent physical and virtual computing domains. Such a scenario could see a VM with an integrated virtual switch passing data from a virtual network to the physical network. The bottom line is that sophistication in software is necessary for DC operators to scale cloud services across their network, compute and storage assets. Although network virtualization has extended the capabilities of physical network resources, SDN provides a further layer of abstraction, where distributed network control is consolidated and centralized within a network controller serving as the brains of the Network Management System (NMS) (ONF, 2014).

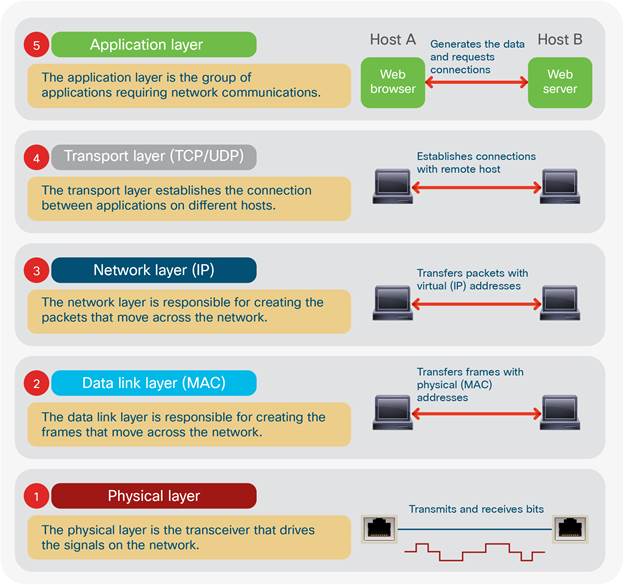

Before progressing into the more technical sections that follow, a primer on layer 2 (L2) semantics is given here to provide an understanding of the layer 2 operations that occur within a TCP/IP network. This relates to section 1, aim xi, and prepares the reader for the network overlay topics.

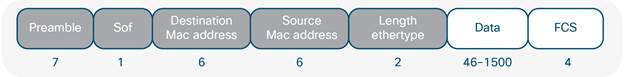

At the physical layer in TCP/IP networks (App. I), electrical signals are encoded with data and sent onto the transmission medium for transport across the network. When these signals reach the data link layer of the TCP/IP stack at the receiving device, they are handled as IEEE 802.3 Ethernet frames (Fig 15), whose fields delineate the logical link and Media Access Control (MAC) mechanisms required for procedural and data integrity checking processes (Cisco, 2012). Ethernet frames contain source and destination MAC addresses. A MAC address is the physical/hardware address burnt into the Network Interface Card (NIC) of any device connected to the network. It acts as a static identifier for a particular network host. Ethernet switches operate at L2 of the TCP/IP stack (App. I) and are responsible for forwarding Ethernet frames from source to destination.

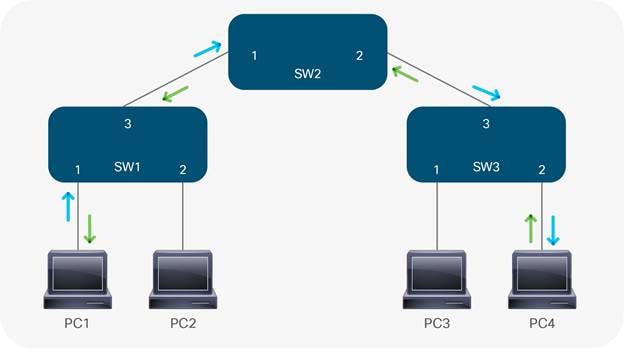

In TCP/IP switching architecture, switches use MAC addresses to forward Ethernet frames (Fig 15) containing network data. The process by which they build their forwarding tables is termed flood and learn. In Fig 14, when PC1 wants to send a message to PC4 for the first time, the message arrives at interface #1 on SW1. (1) With no entries in SW1’s Content Addressable Memory (CAM) table, SW1 examines the source MAC address of the incoming frame and associates it with interface #1. SW1 then floods an Address Resolution Protocol (ARP) request to resolve the destination MAC address. (2) The ARP request is a broadcast query sent to every device within the broadcast domain (VLAN). (3) As PC4 is the only host that matches the query, it responds with a unicast frame towards SW1. Receiving the response on interface #2, SW3 records the source MAC address of PC4 against its #2 interface. Likewise, SW2 learns that PC4 can be reached through its #2 interface and records this in its CAM table. SW1 follows suit by associating PC4’s MAC address with its #3 interface. This builds the L2 forwarding path between PC1 and PC4. Fig 14b shows the unicast request (blue arrow) and response (green arrow) between PC1 and PC4 now that the L2 topology has converged.
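The flood and learn behaviour described above can be sketched in a few lines of Python. This is a minimal illustration of a single switch, not a model of any vendor implementation: the `LearningSwitch` class, the MAC addresses and the three-port layout are assumptions made for the example, with the port numbers chosen to match SW1 in the walkthrough (PC1 on interface #1, PC4 reachable via interface #3).

```python
from typing import Dict, List, Optional

BROADCAST = "ff:ff:ff:ff:ff:ff"


class LearningSwitch:
    """Toy L2 switch: learns source MACs, floods unknown or broadcast destinations."""

    def __init__(self, name: str, ports: List[int]) -> None:
        self.name = name
        self.ports = ports
        self.cam: Dict[str, int] = {}  # MAC address -> port it was last seen on

    def receive(self, src_mac: str, dst_mac: str, in_port: int) -> List[int]:
        """Process one frame and return the ports it is forwarded out of."""
        # Learn: associate the source MAC with the ingress port.
        self.cam[src_mac] = in_port
        out_port: Optional[int] = self.cam.get(dst_mac)
        if dst_mac == BROADCAST or out_port is None:
            # Flood: every port except the one the frame arrived on.
            return [p for p in self.ports if p != in_port]
        if out_port == in_port:
            return []  # destination sits on the ingress segment, so filter
        return [out_port]


# Hypothetical addresses standing in for PC1 and PC4.
PC1, PC4 = "00:00:00:00:00:01", "00:00:00:00:00:04"
sw1 = LearningSwitch("SW1", ports=[1, 2, 3])

flooded = sw1.receive(PC1, BROADCAST, in_port=1)  # PC1's ARP request is flooded
reply = sw1.receive(PC4, PC1, in_port=3)          # PC4's unicast reply
later = sw1.receive(PC1, PC4, in_port=1)          # subsequent unicast traffic
print(flooded, reply, later, sw1.cam)
```

After the first exchange the CAM table holds both hosts, so later frames between PC1 and PC4 leave on a single port instead of being flooded.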

In enterprise networks, there are multiple departments needing exclusive access to company resources related to departmental tasks. This includes applications, databases, servers and other corporate resources. In technical terms, the isolation required is termed segmentation. Segmentation is provided by TCP/IP networks through VLANs. VLANs are logical separations assigned to network hosts that can be based on location, department or organizational function. VLANs provide a security boundary to contain network traffic within a broadcast domain (Cisco, 2015b). Typically, every Ethernet interface on a network switch connected to an end user specifies an access VLAN.

This defines the broadcast domain (BD) that the interface belongs to, which in turn relates to an IP subnetwork. A broadcast domain denotes the extent to which broadcast traffic is propagated to devices within the same administrative domain. To put this into context, if HR is allocated VLAN 10, Marketing VLAN 20 and R&D VLAN 30, only devices connected to the respective VLAN will be able to access and process data specific to that VLAN. So, if a new employee started in R&D, their workstation would connect to the interface of an Ethernet switch configured for access VLAN 30, making them a host within the R&D BD. This would enable the new hire to receive an IP address inside R&D VLAN 30, providing connectivity to resources specific to the R&D department while isolating their network traffic from any other VLAN. For data to cross the broadcast domain (e.g. HR sending an email to Marketing), a Layer 3 (L3) device such as a router is required.
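As a rough illustration of the HR/Marketing/R&D example, the sketch below maps access ports to VLANs and VLANs to subnets, then checks whether two hosts share a broadcast domain or need an L3 device between them. The port names and the `10.0.x.0/24` subnets are hypothetical values chosen for the example; only the VLAN numbers come from the text.

```python
from ipaddress import IPv4Network, ip_network
from typing import Dict

# VLAN IDs from the example; the subnets are assumed for illustration.
VLAN_SUBNETS: Dict[int, IPv4Network] = {
    10: ip_network("10.0.10.0/24"),  # HR
    20: ip_network("10.0.20.0/24"),  # Marketing
    30: ip_network("10.0.30.0/24"),  # R&D
}

# Hypothetical access ports and the access VLAN configured on each.
ACCESS_VLAN: Dict[str, int] = {
    "port1": 10,
    "port2": 20,
    "port3": 30,
    "port4": 30,  # the new R&D hire's workstation
}


def subnet_for_port(port: str) -> IPv4Network:
    """Return the IP subnet a host on this access port would be addressed from."""
    return VLAN_SUBNETS[ACCESS_VLAN[port]]


def needs_l3_device(port_a: str, port_b: str) -> bool:
    """Hosts in different VLANs (broadcast domains) need a router to communicate."""
    return ACCESS_VLAN[port_a] != ACCESS_VLAN[port_b]


print(subnet_for_port("port4"))            # subnet assigned to R&D in this sketch
print(needs_l3_device("port1", "port2"))   # HR to Marketing
print(needs_l3_device("port3", "port4"))   # R&D to R&D
```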

SOF = Start of Frame delimiter

FCS = Frame Check Sequence

Grey fields indicate Ethernet header

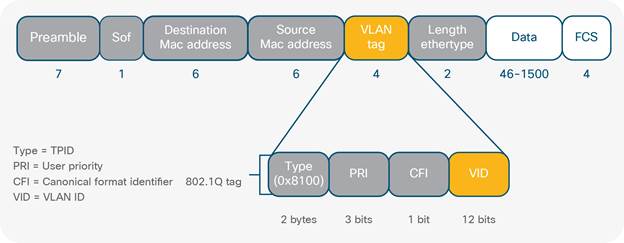

In production networks, switch access ports connected to end user workstations are assigned to a single VLAN denoting the BD that the connected device is a member of. When Ethernet frames travel between network switches, they traverse point-to-point connections called trunk links (fig 4), which allow traffic from multiple VLANs to propagate throughout the network. For the network to accurately transport VLAN-specific data from source to destination, there needs to be a way of identifying VLANs within the Ethernet frame (Cisco, 2014d).

The IEEE 802.1Q standard provides this: VLAN-aware network devices (switches) insert a 4-byte header, called a VLAN tag or 802.1Q header (Fig 16), into the Ethernet frame as it travels across trunk links. This tag defines the VLAN membership of each Ethernet frame.
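To make the tagging step concrete, the following sketch inserts a 4-byte 802.1Q tag after the source MAC address of a raw Ethernet frame and reads the VLAN ID back out. The helper names `add_vlan_tag` and `read_vlan_id` and the sample frame are assumptions for illustration. The sketch works on a frame without preamble or FCS, so recalculating the Frame Check Sequence, which a real switch must do after tagging, is left out.

```python
import struct
from typing import Optional

TPID_8021Q = 0x8100  # Tag Protocol Identifier that marks an 802.1Q tag


def add_vlan_tag(frame: bytes, vid: int, pcp: int = 0, dei: int = 0) -> bytes:
    """Insert a 4-byte 802.1Q tag after the destination and source MACs."""
    if not 0 <= vid <= 0xFFF:
        raise ValueError("VLAN ID must fit in 12 bits")
    if not 0 <= pcp <= 7 or dei not in (0, 1):
        raise ValueError("PCP is 3 bits and DEI is 1 bit")
    # Tag Control Information: 3-bit priority, 1-bit DEI, 12-bit VLAN ID.
    tci = (pcp << 13) | (dei << 12) | vid
    tag = struct.pack("!HH", TPID_8021Q, tci)
    return frame[:12] + tag + frame[12:]


def read_vlan_id(frame: bytes) -> Optional[int]:
    """Return the VLAN ID of a tagged frame, or None if the frame is untagged."""
    if len(frame) < 16:
        return None
    tpid, tci = struct.unpack("!HH", frame[12:16])
    if tpid != TPID_8021Q:
        return None
    return tci & 0x0FFF  # the low 12 bits carry the VID


# Hypothetical untagged frame: dst MAC, src MAC, EtherType (IPv4), payload.
untagged = (
    bytes.fromhex("ffffffffffff")
    + bytes.fromhex("000000000001")
    + struct.pack("!H", 0x0800)
    + b"payload"
)
tagged = add_vlan_tag(untagged, vid=30)
print(len(untagged), len(tagged), read_vlan_id(tagged), read_vlan_id(untagged))
```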

The important part of the 802.1Q tag in this context is the VLAN ID (VID). This field is 12 bits long, which determines the maximum number of VLANs that can be used at any time across the network. As each bit is a binary digit, it can only hold one of two values, either on/1 or off/0. So theoretically the maximum number of VLANs as specified by the VID is 2^12 = 4096. Although ~4000 individual network segments are sufficient for most enterprise environments, this is not adequate for DC/cloud environments. Modern DCs are structured with physical servers stored in multiple rack cabinets that can run into the thousands (Fig 17). When servers share common network, storage and compute resources they are collectively termed a ‘pod’, which may exist within one cabinet or span multiple rack cabinets depending upon the nature of the services they support (IETF, 2014). With the advent of virtualization, powerful servers have the ability to host hundreds of VMs, each containing NICs that often communicate on multiple VLANs. Virtual networks also contain logical constructs that require VLAN assignments to categorize and isolate traffic traversing between physical and virtual network topologies. With some cloud providers having upwards of 900,000 servers, it quickly becomes apparent why more than ~4k VLANs are required to provide adequate scale in the cloud (Miller, 2011).
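The arithmetic behind the VLAN ceiling can be checked directly. The sketch below computes the size of the 12-bit VID space and compares it against a demand figure. The tenant and segment counts are purely hypothetical numbers picked to show the comparison, not data from the sources cited above. It also accounts for the two VID values (0 and 4095) that 802.1Q reserves, which leaves 4094 usable VLANs.

```python
VID_BITS = 12

total_vids = 2 ** VID_BITS              # every value a 12-bit field can hold
reserved_vids = {0x000, 0xFFF}          # reserved by 802.1Q, not usable as VLANs
usable_vids = total_vids - len(reserved_vids)


def segments_needed(tenants: int, segments_per_tenant: int) -> int:
    """Isolated L2 segments required if each tenant gets its own VLANs."""
    return tenants * segments_per_tenant


def fits_in_vlan_space(segments: int) -> bool:
    """True if the demand can be met with 802.1Q VLAN IDs alone."""
    return segments <= usable_vids


# Hypothetical multi-tenant DC: 2,000 tenants with 3 segments each.
demand = segments_needed(tenants=2000, segments_per_tenant=3)
print(total_vids, usable_vids, demand, fits_in_vlan_space(demand))
```

Even this modest hypothetical tenant count exceeds the available VID space, which is the scaling pressure that the overlay techniques discussed later are meant to relieve.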

Network operators have long investigated ways to optimize the management of their DC operations. In current enterprise networks, VLAN segmentation and the Spanning Tree Protocol (STP) enable the isolation of broadcast domains while providing a single L2 path for VLAN data to cross the network. This provides network hosts with a secure, logical boundary where only traffic within the same broadcast domain is processed. The STP algorithm removes switching loops caused by redundant paths. In the DC, the number of physical servers can range into the thousands. Virtualisation multiplies this administrative domain considerably, as VMs require communication between physical and virtual hosts. Management and optimization become challenging in large-scale deployments, as thousands of physical servers can each house hundreds of virtual servers. For each network host to remain reachable across the entire DC fabric, end state information (IP and MAC address mappings) needs to be accurately replicated, propagated and updated in the forwarding tables of spine-leaf devices.

Although multiple tenants have their own virtual network domain, they share the underlying physical infrastructure. This can cause the duplication of end state information, resulting in address conflicts and reachability issues between physical and virtual networks. In large-scale, multi-tenant environments, computing workloads are often migrated from the private to the public cloud when a hardware failure occurs or business imperatives change (Cisco, 2014e). This is termed the hybrid cloud, where private cloud services burst into the public cloud according to business/functional requirements. Decoupling computing workloads from their location in the underlying network infrastructure is necessary for operators to provide elastic compute services on demand. Virtualized environments often require the layer 2 domain to scale between DCs in order to adequately allocate compute, network and storage resources. STP fails to support this adequately because it disables redundant links, which wastes investment as operators end up paying for more links than they can use (Fig 6a/6b). In the context of scalability and operational efficiency, this is why network operators prefer multipathing techniques across the spine-leaf architecture, utilizing Equal Cost Multipathing (ECMP) and network overlays, over L2 technologies like STP (IETF, 2014).
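To illustrate why ECMP makes use of links that STP would block, the sketch below spreads flows across several equal-cost spine uplinks by hashing each flow's 5-tuple. It is a simplified model under stated assumptions: real switches use hardware hash functions rather than SHA-256, and the spine names, addresses and the `ecmp_next_hop` helper are hypothetical. Hashing per flow rather than per packet keeps all packets of one flow on the same path, which avoids reordering.

```python
import hashlib
from collections import Counter
from typing import List, Tuple

# Flow key: source IP, destination IP, IP protocol, source port, destination port.
FlowKey = Tuple[str, str, int, int, int]


def ecmp_next_hop(flow: FlowKey, next_hops: List[str]) -> str:
    """Pick one of the equal-cost next hops by hashing the flow's 5-tuple."""
    # SHA-256 is a stand-in for a switch's hardware hash (assumption).
    digest = hashlib.sha256(repr(flow).encode("utf-8")).digest()
    index = int.from_bytes(digest[:4], "big") % len(next_hops)
    return next_hops[index]


# Hypothetical leaf switch with four equal-cost uplinks to the spine layer.
spines = ["spine1", "spine2", "spine3", "spine4"]

# 1,000 hypothetical TCP flows that differ only in source port.
flows: List[FlowKey] = [
    ("10.0.30.5", "10.0.10.9", 6, 10000 + i, 443) for i in range(1000)
]
usage = Counter(ecmp_next_hop(flow, spines) for flow in flows)
print(usage)  # all four uplinks carry traffic; STP would leave only one active
```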

In any enterprise network deployment, convergence is critical. Network convergence is where changes to the end state of a host (e.g. a new IP address being allocated to a DB server) are propagated by routing protocols throughout the infrastructure, updating device forwarding tables so that traffic is forwarded correctly. In cloud and enterprise environments, rapid convergence is a major concern because maintaining end state information is highly complex, for the reasons described previously. Managing the complexity involved in maintaining real-time reachability of DC endpoints (EPs), combined with the administration, scaling and distribution of DC workloads, is an issue providers are constantly looking to improve. In an attempt to solve both the 4096 VLAN limitation and these scaling challenges, network overlays have come to the forefront (Onisick, 2012). The mass adoption of server virtualization seen in DC environments offers greater speed, agility and flexibility in the provision and distribution of computing workloads. Up until this point, the same level of innovation, speed and efficiency has not been seen in the administration of contemporary networks. Network overlays provide logical tunneling techniques that help to bridge this gap by removing the dependency between the physical location of a device and its logical instantiation.