FlexPod Datacenter with NetApp All Flash FAS, Cisco Application Centric Infrastructure, and VMware vSphere

Deployment Guide for FlexPod with NetApp All Flash FAS, Cisco Application Centric Infrastructure, and VMware vSphere 5.5U2

Last Updated: August 25, 2015

The CVD program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, visit http://www.cisco.com/go/designzone.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

CCDE, CCENT, Cisco Eos, Cisco Lumin, Cisco Nexus, Cisco StadiumVision, Cisco TelePresence, Cisco WebEx, the Cisco logo, DCE, and Welcome to the Human Network are trademarks; Changing the Way We Work, Live, Play, and Learn and Cisco Store are service marks; and Access Registrar, Aironet, AsyncOS, Bringing the Meeting To You, Catalyst, CCDA, CCDP, CCIE, CCIP, CCNA, CCNP, CCSP, CCVP, Cisco, the Cisco Certified Internetwork Expert logo, Cisco IOS, Cisco Press, Cisco Systems, Cisco Systems Capital, the Cisco Systems logo, Cisco Unity, Collaboration Without Limitation, EtherFast, EtherSwitch, Event Center, Fast Step, Follow Me Browsing, FormShare, GigaDrive, HomeLink, Internet Quotient, IOS, iPhone, iQuick Study, IronPort, the IronPort logo, LightStream, Linksys, MediaTone, MeetingPlace, MeetingPlace Chime Sound, MGX, Networkers, Networking Academy, Network Registrar, PCNow, PIX, PowerPanels, ProConnect, ScriptShare, SenderBase, SMARTnet, Spectrum Expert, StackWise, The Fastest Way to Increase Your Internet Quotient, TransPath, WebEx, and the WebEx logo are registered trademarks of Cisco Systems, Inc. and/or its affiliates in the United States and certain other countries.

All other trademarks mentioned in this document or website are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (0809R)

© 2015 Cisco Systems, Inc. All rights reserved.

Deployment Hardware and Software

Complete the Configuration Worksheet

Configure Clustered Data ONTAP Nodes

Set Onboard UTA2 Ports Personality

Set Auto-Revert on Cluster Management

Set Up Management Broadcast Domain

Set Up Service Processor Network Interface

Disable Flow Control on UTA2 Ports

Enable Cisco Discovery Protocol

Create Jumbo Frame MTU Broadcast Domains in Clustered Data ONTAP

Create Storage Virtual Machine

Create Load-Sharing Mirrors of SVM Root Volume

Add Infrastructure SVM Administrator

Perform Initial Setup of Cisco UCS 6248 Fabric Interconnect for FlexPod Environments

Upgrade Cisco UCS Manager Software to Version 2.2(3d)

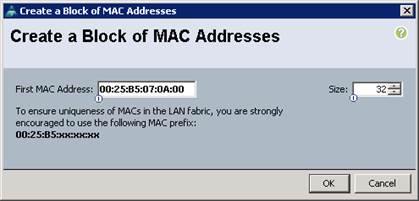

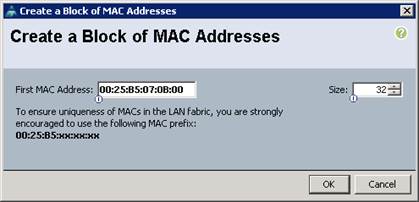

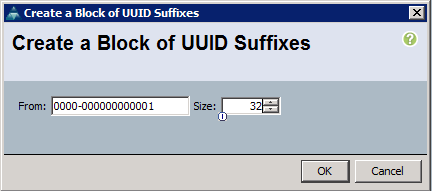

Add Block of IP Addresses for KVM Access

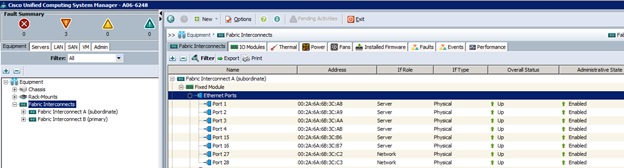

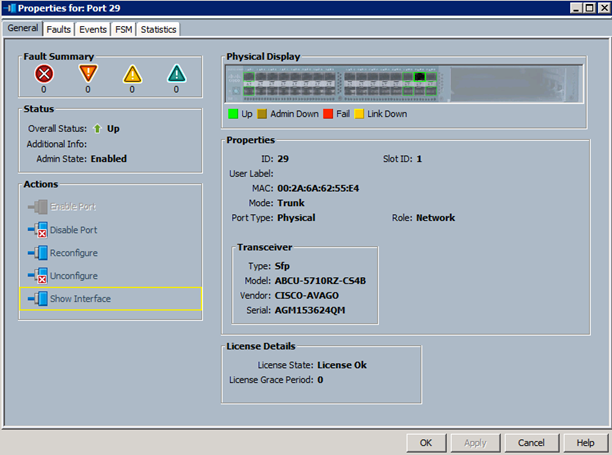

Enable Server and Uplink Ports

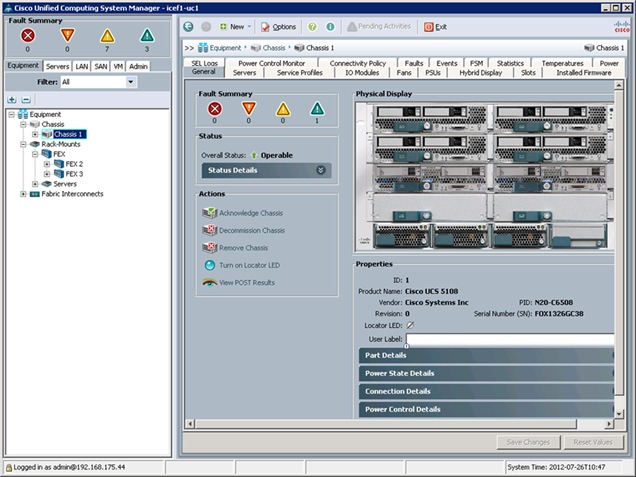

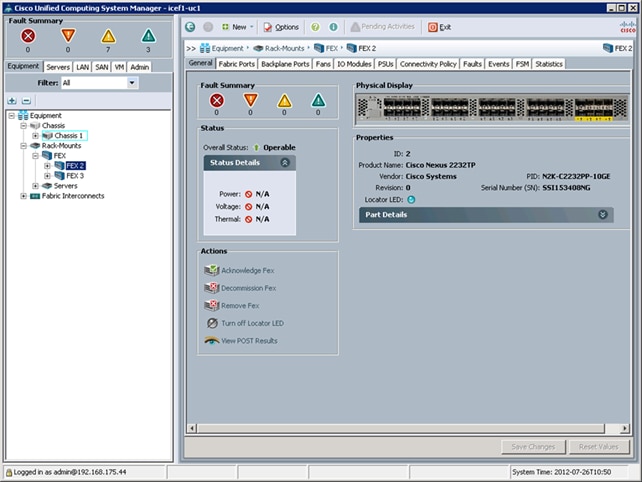

Acknowledge Cisco UCS Chassis and FEX

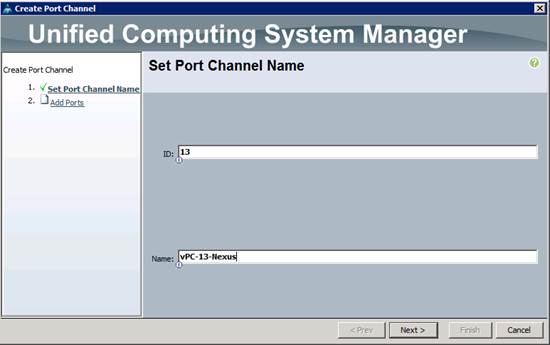

Create Uplink Port Channels to Cisco Nexus Switches

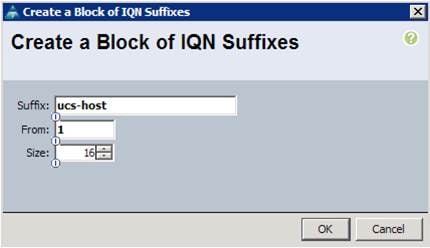

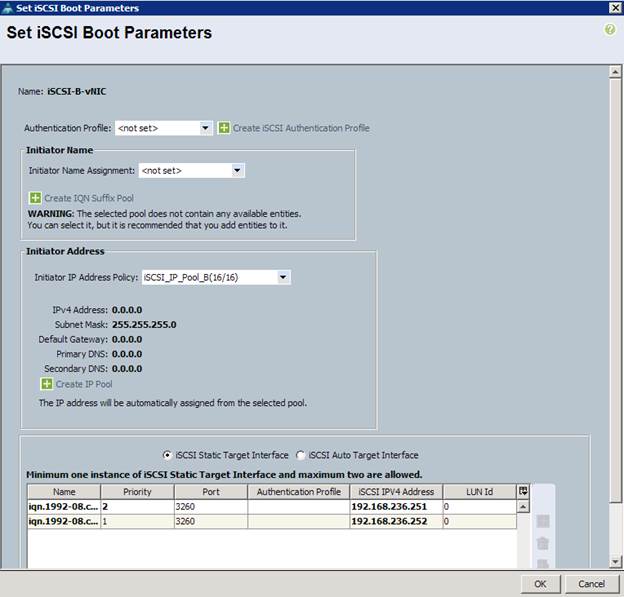

Create IQN Pools for iSCSI Boot

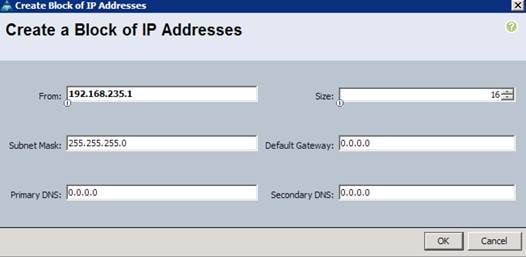

Create IP Pools for iSCSI Boot

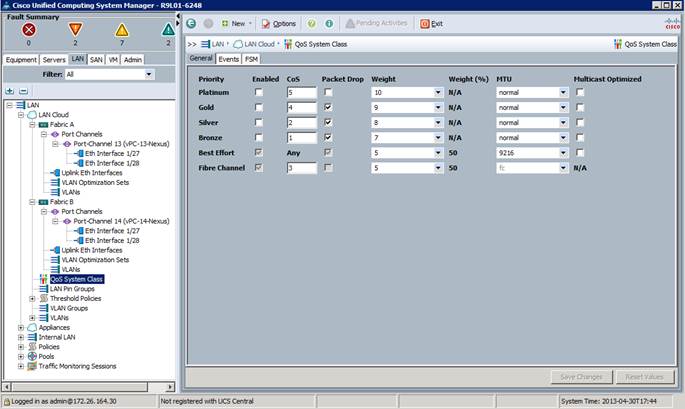

Set Jumbo Frames in Cisco UCS Fabric

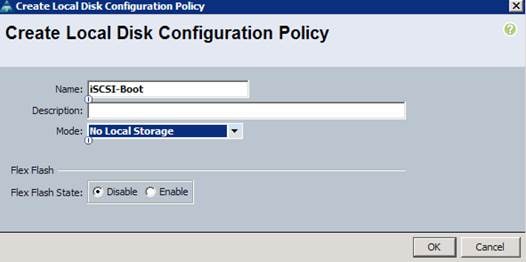

Create Local Disk Configuration Policy (Optional)

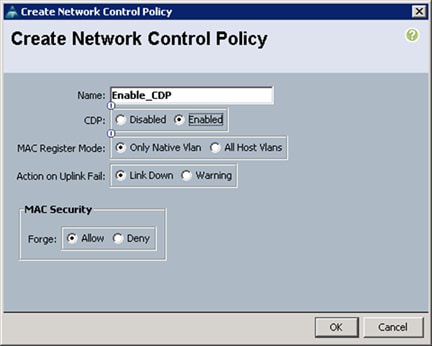

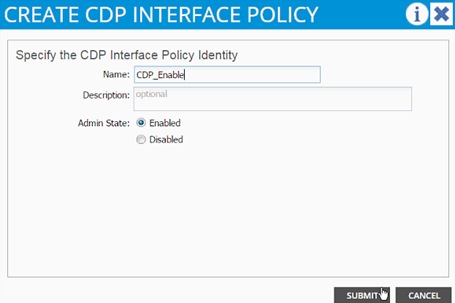

Create Network Control Policy for Cisco Discovery Protocol

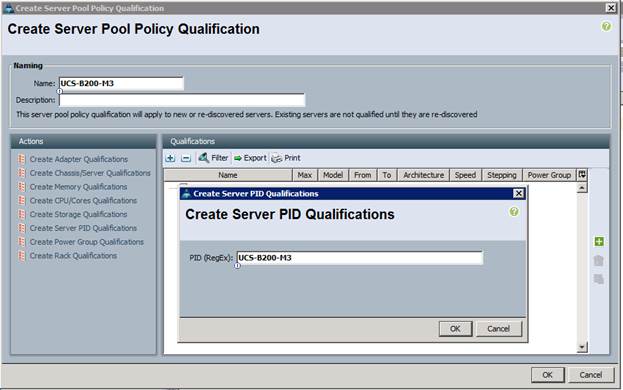

Create Server Pool Qualification Policy (Optional)

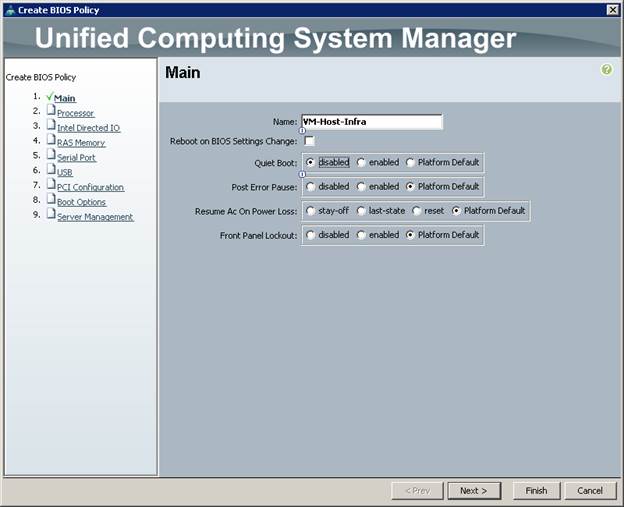

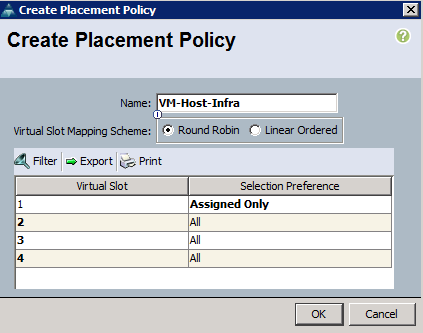

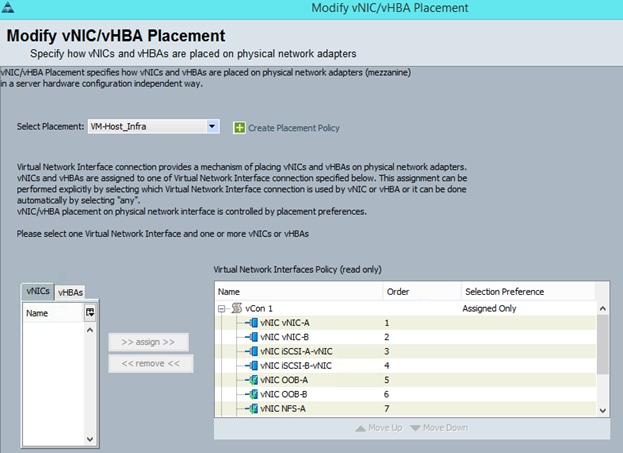

Create vNIC/vHBA Placement Policy for Virtual Machine Infrastructure Hosts

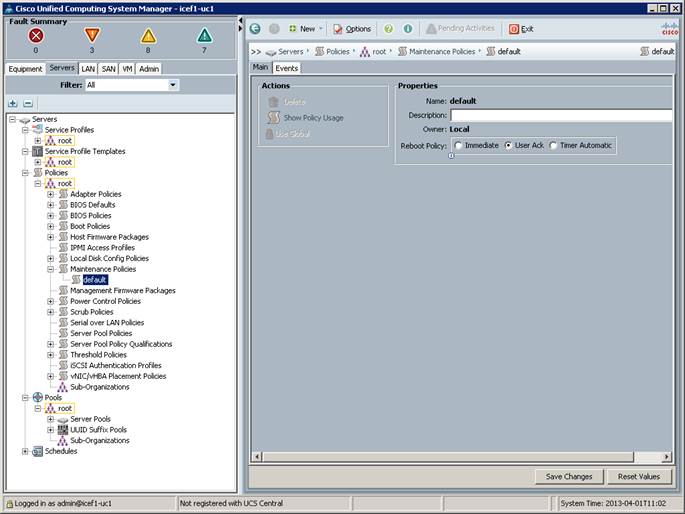

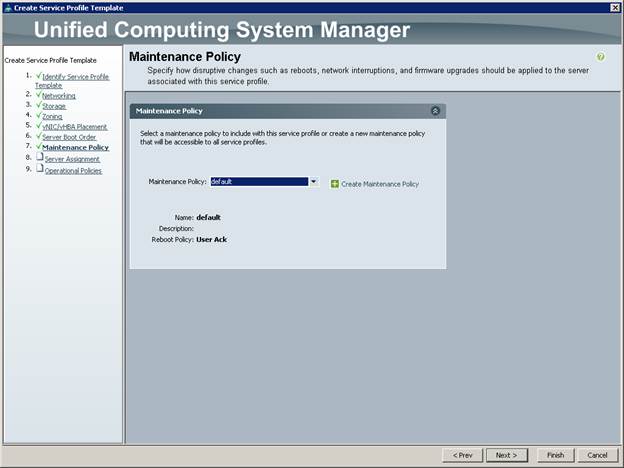

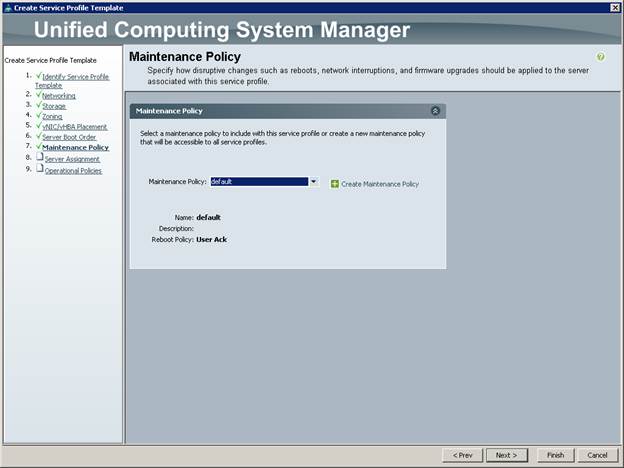

Update Default Maintenance Policy

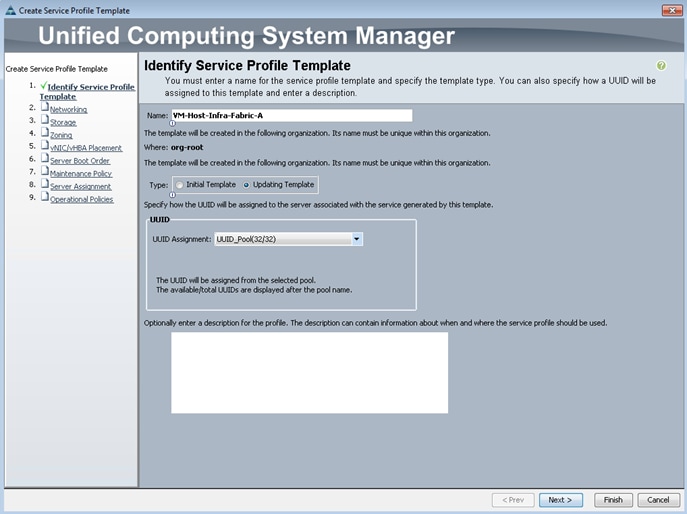

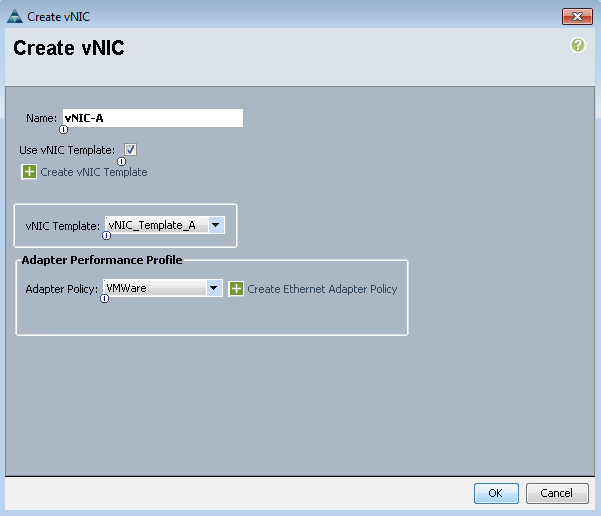

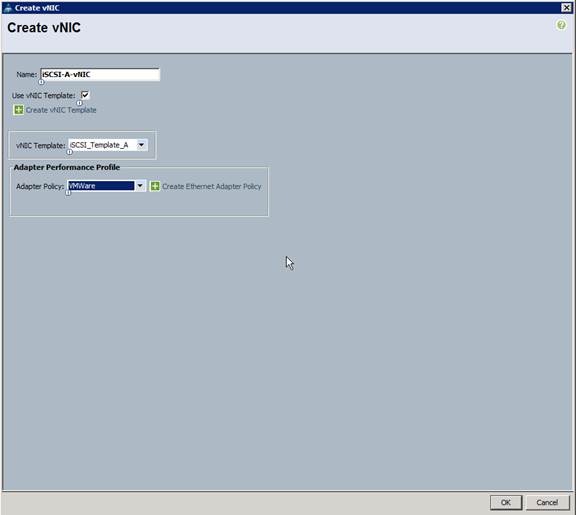

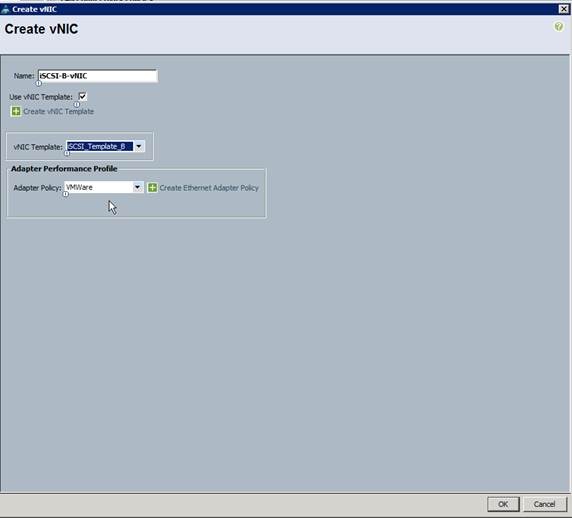

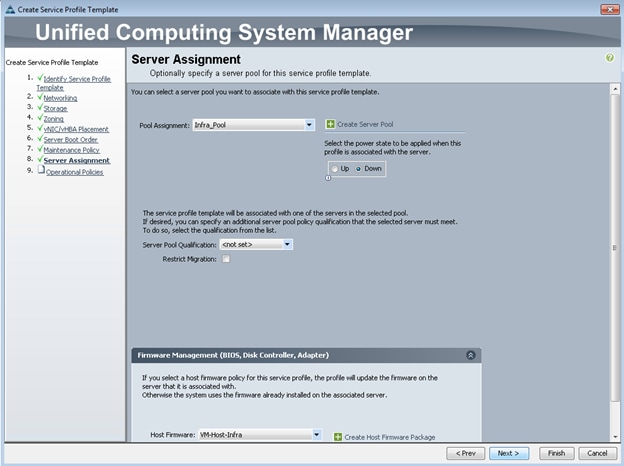

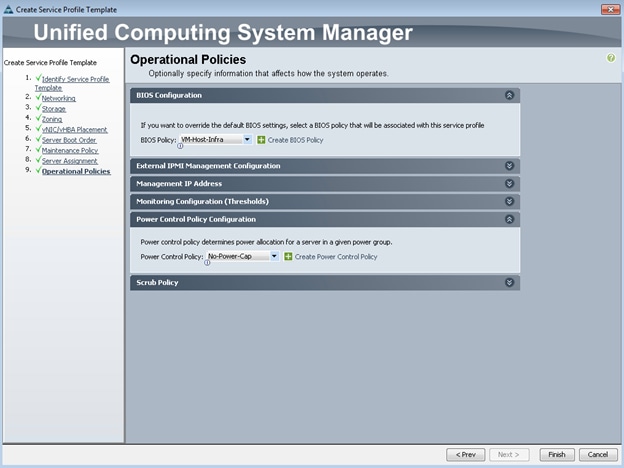

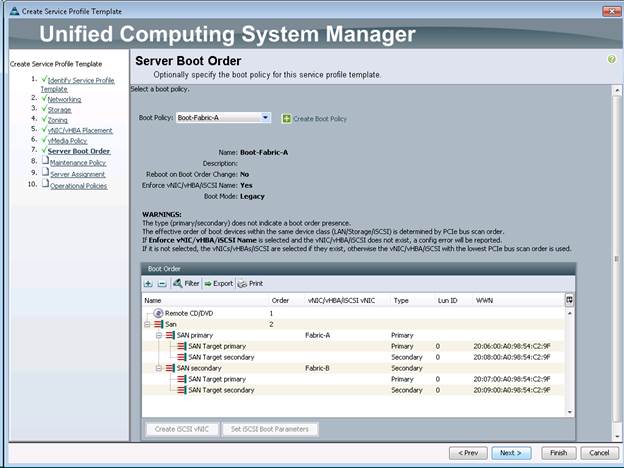

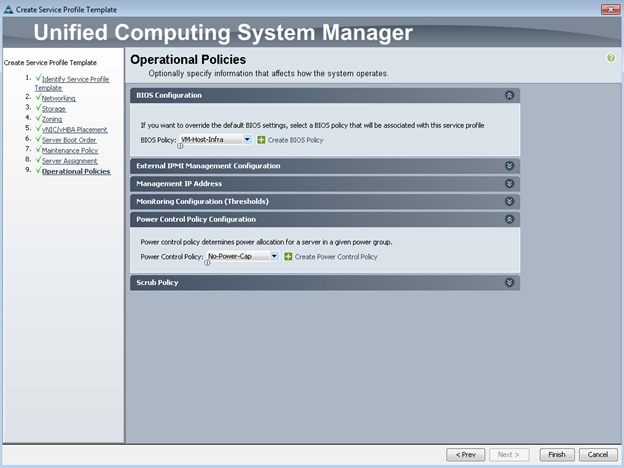

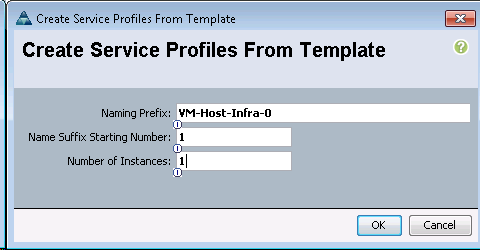

Create Service Profile Template

Add More Servers to FlexPod Unit

ACI Infrastructure Configuration

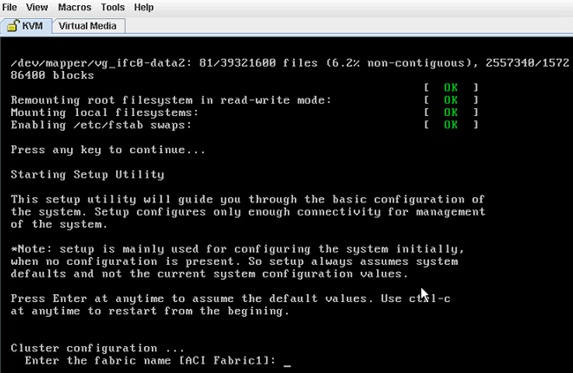

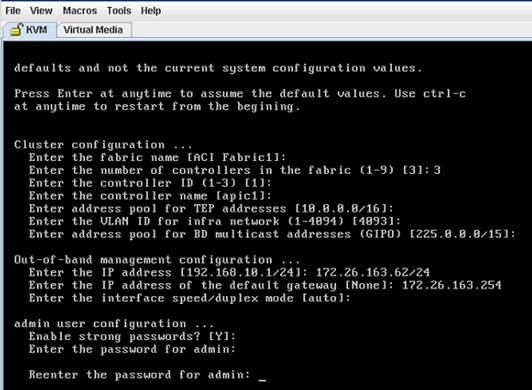

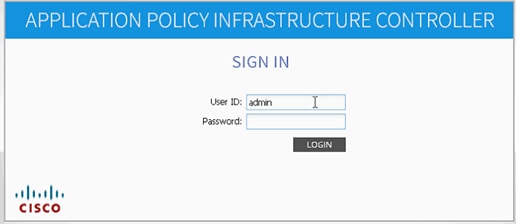

Cisco APIC Initial Configuration Setup

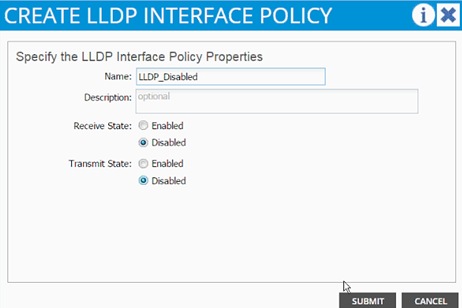

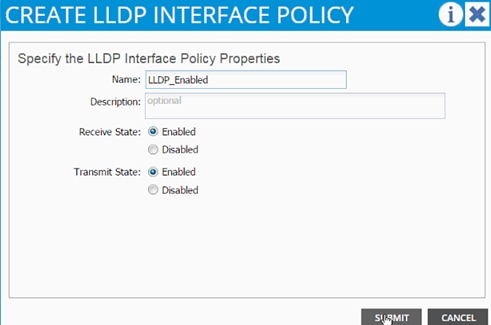

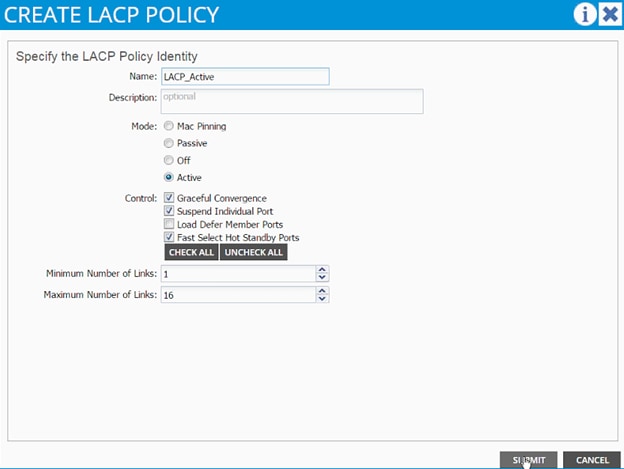

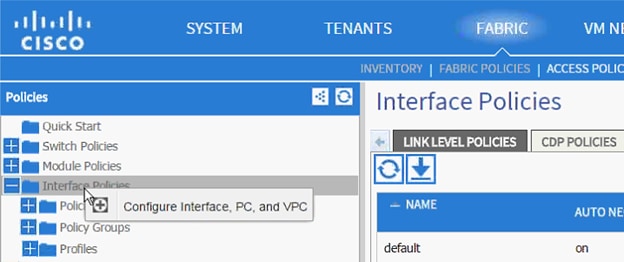

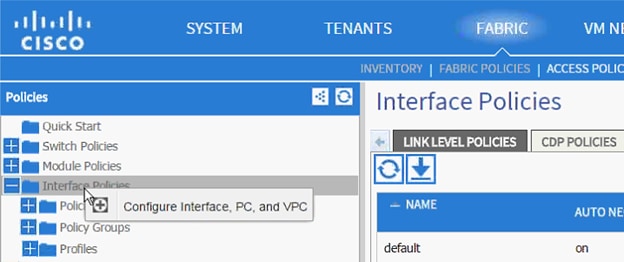

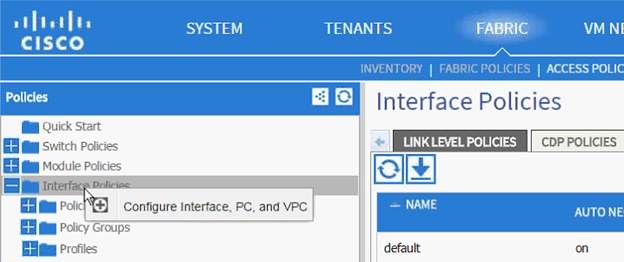

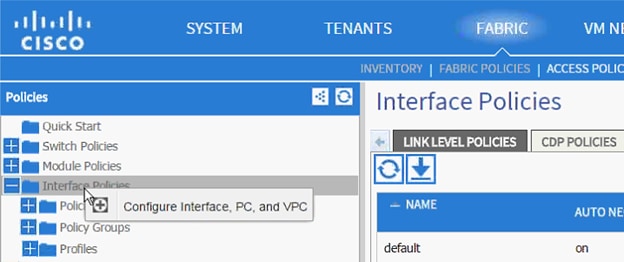

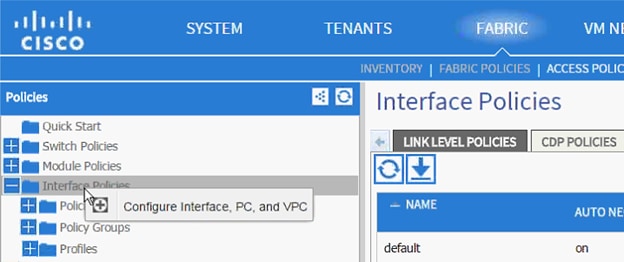

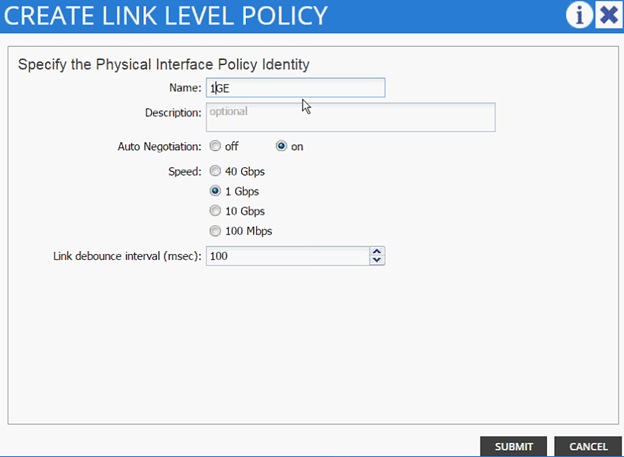

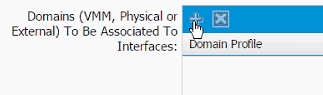

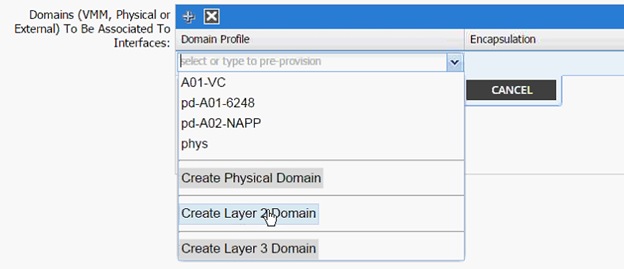

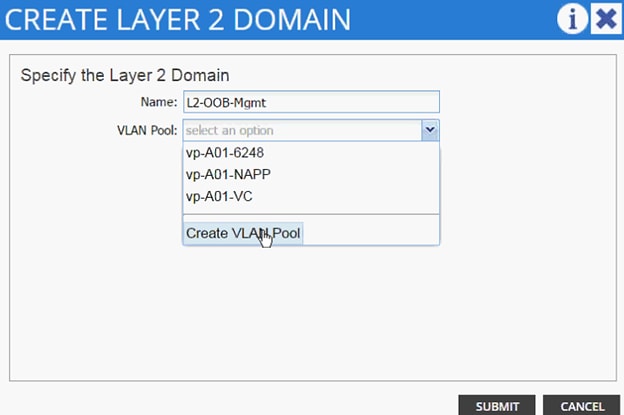

Cisco ACI – Defining Fabric Access Policies

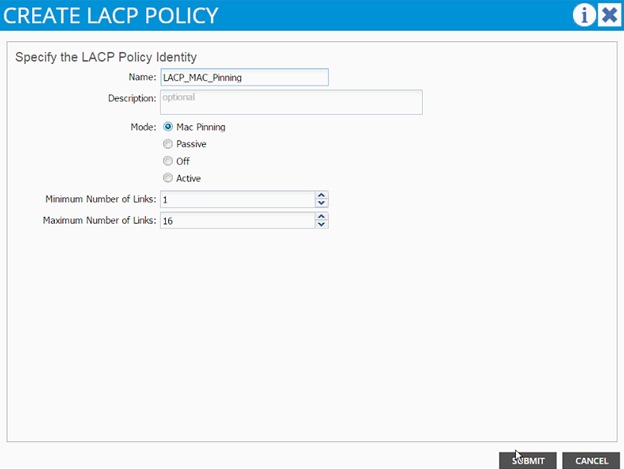

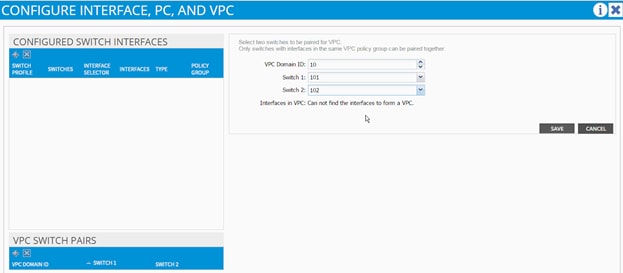

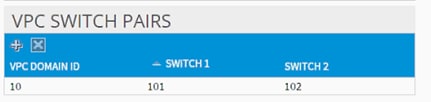

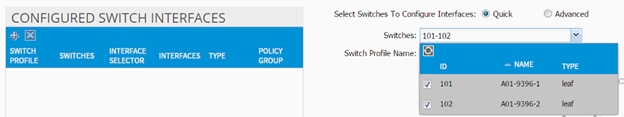

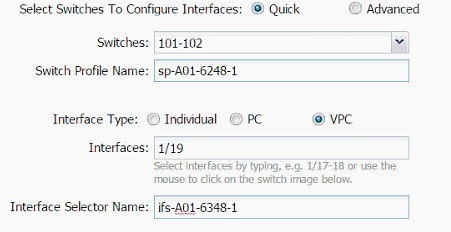

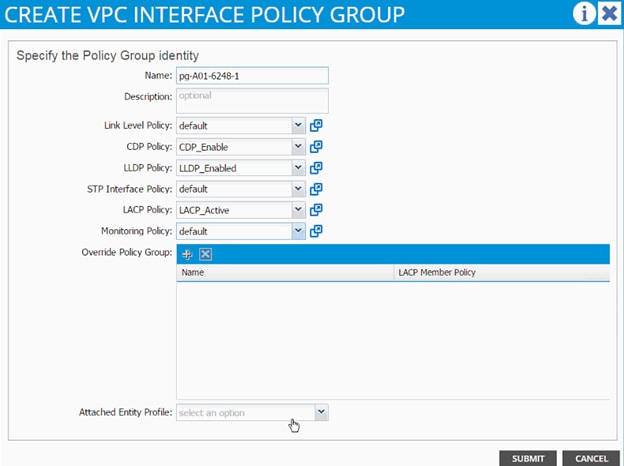

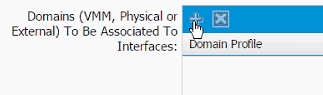

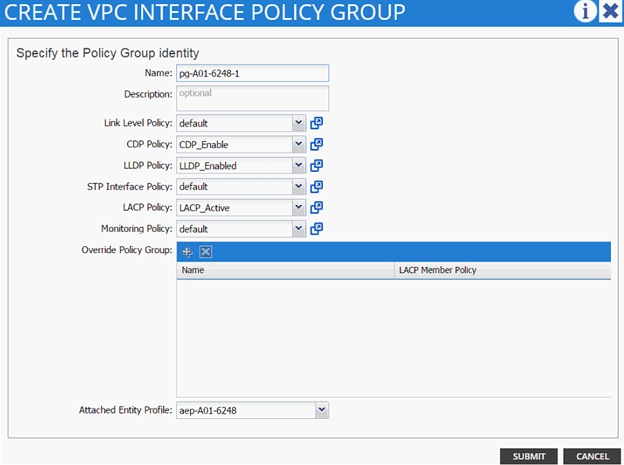

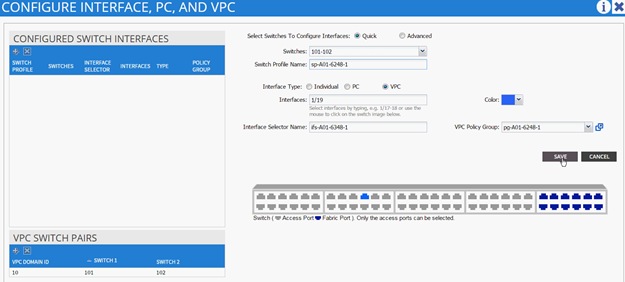

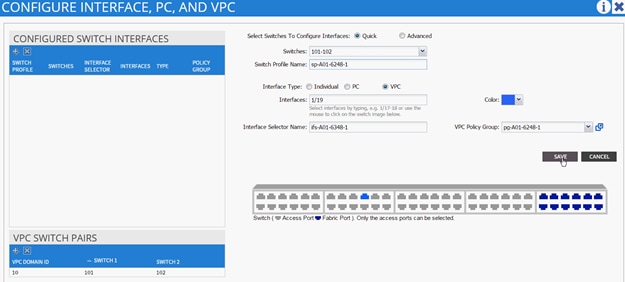

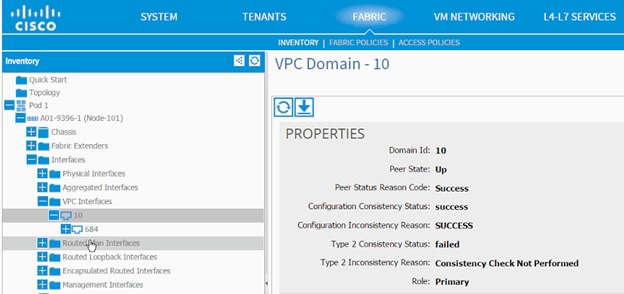

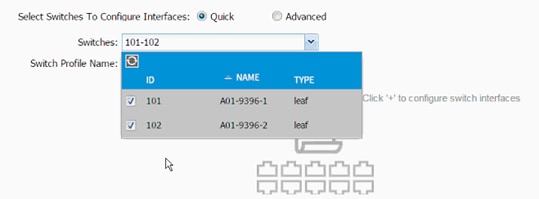

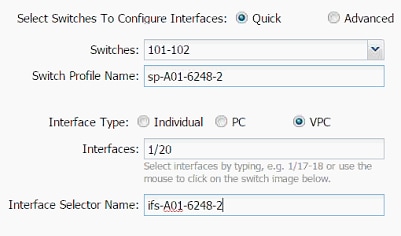

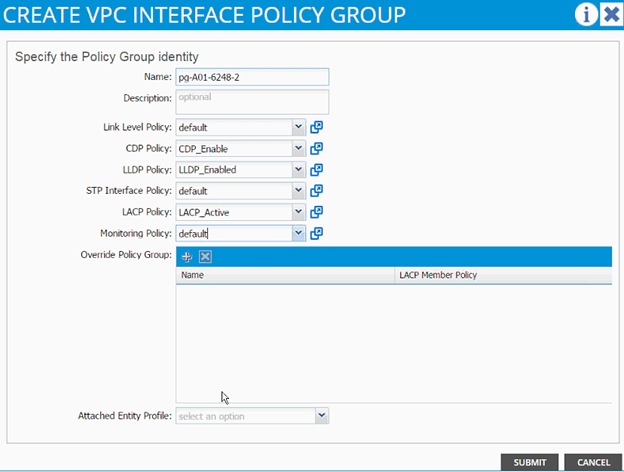

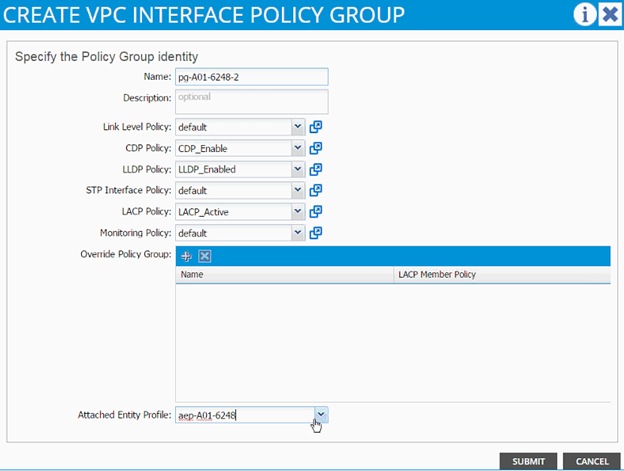

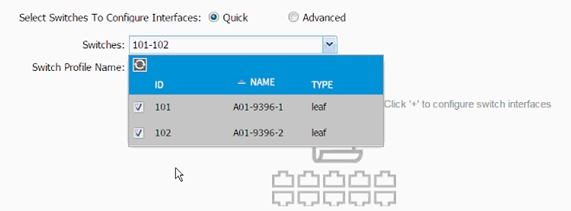

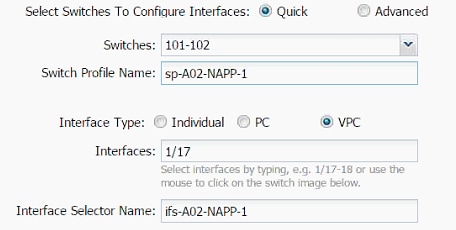

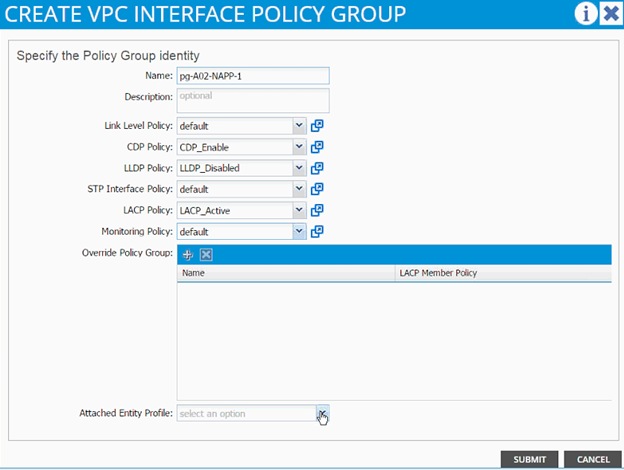

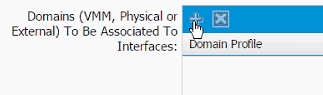

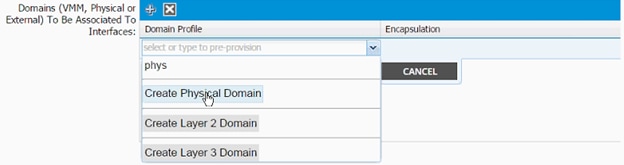

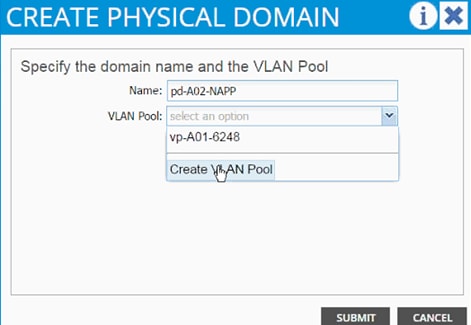

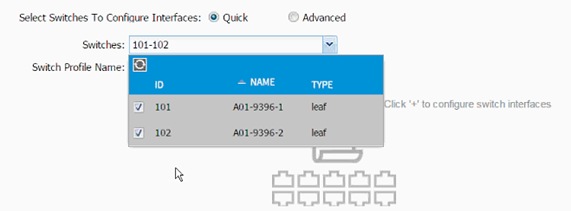

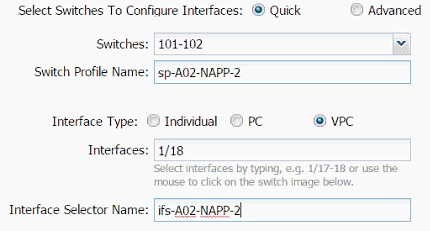

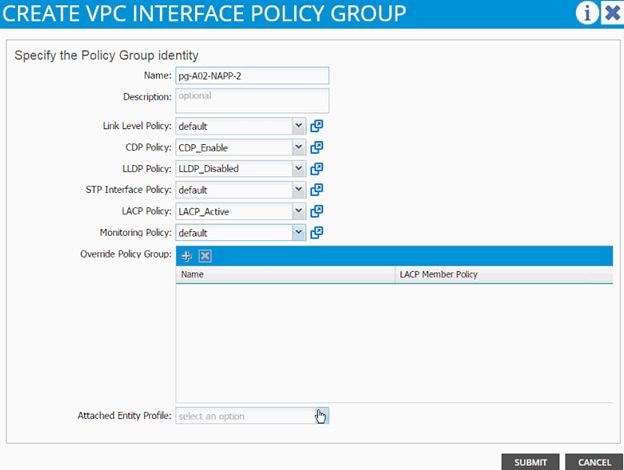

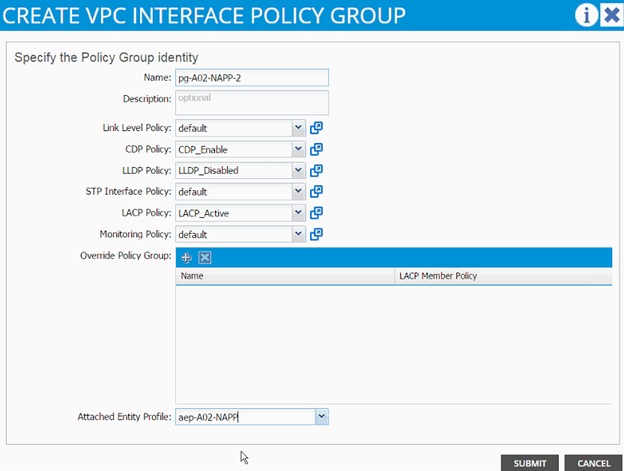

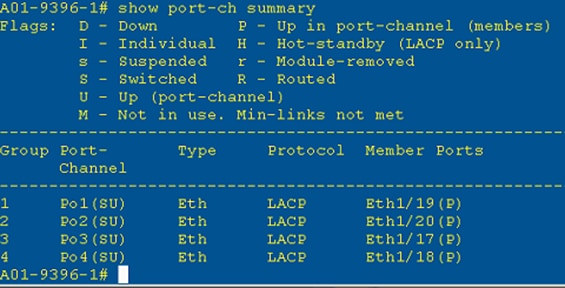

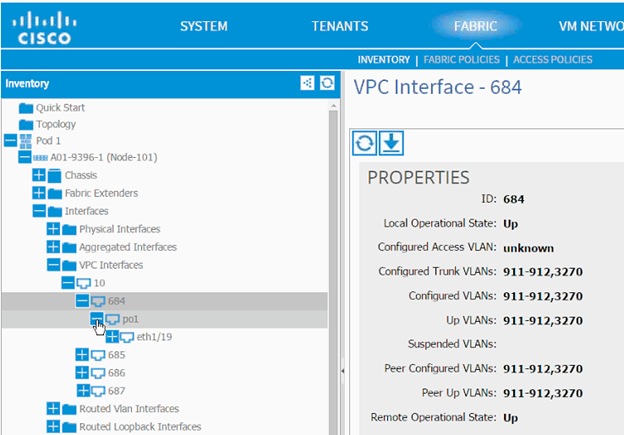

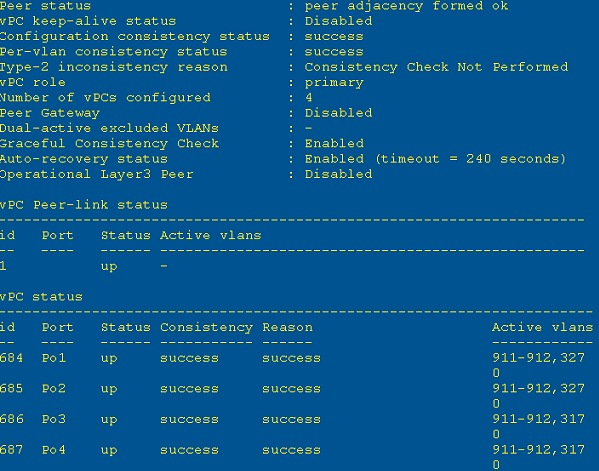

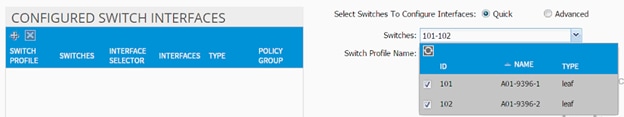

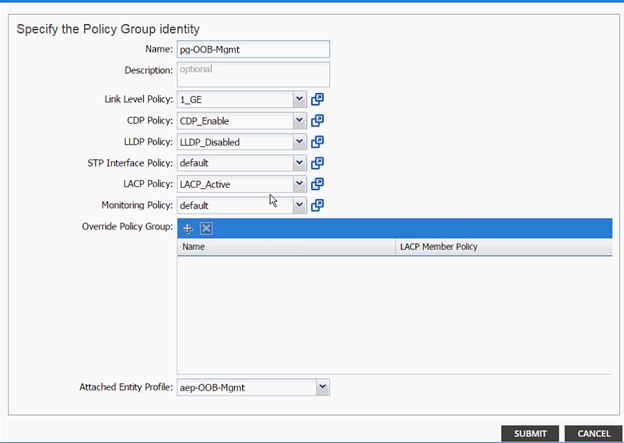

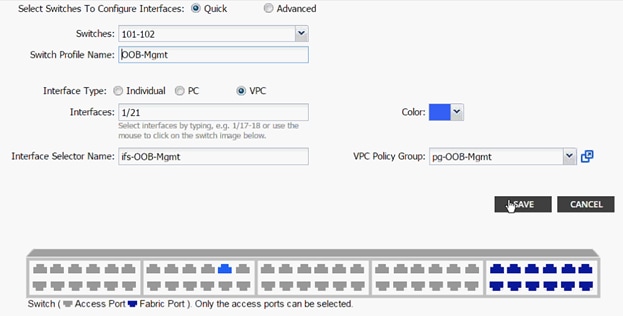

Cisco ACI – Creating vPC for Cisco UCS Fabric Interconnect A

Cisco ACI – Creating vPC for UCS Fabric Interconnect B

Cisco ACI – Creating vPC for NetApp Controller 1

Cisco ACI – Creating vPC for NetApp Controller 2

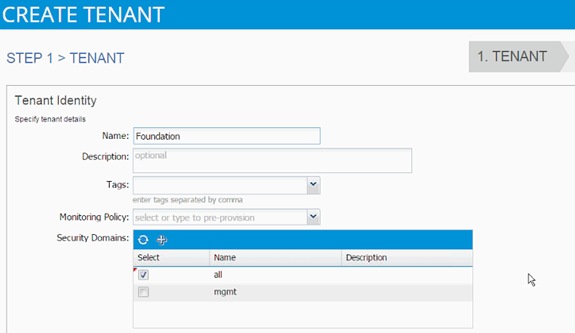

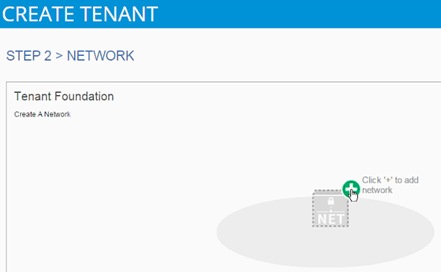

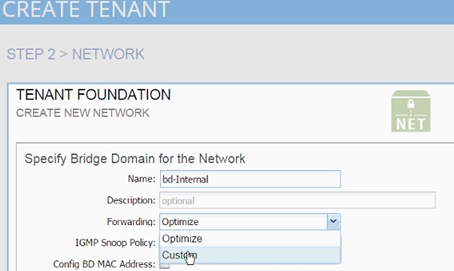

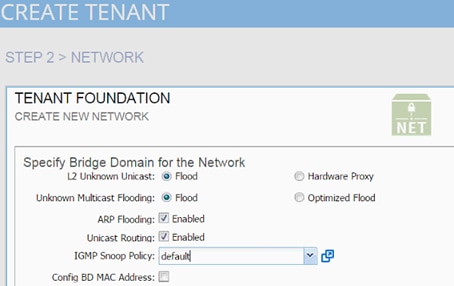

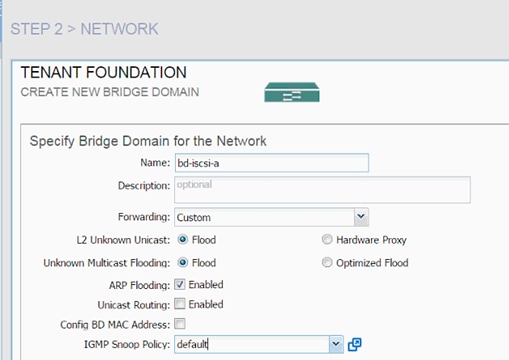

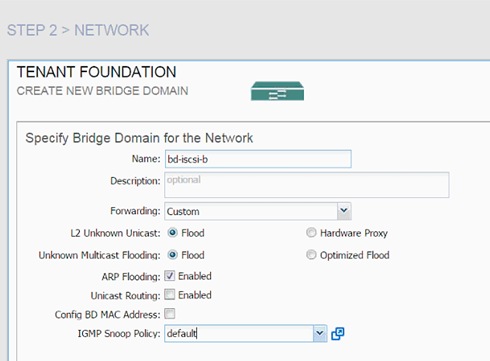

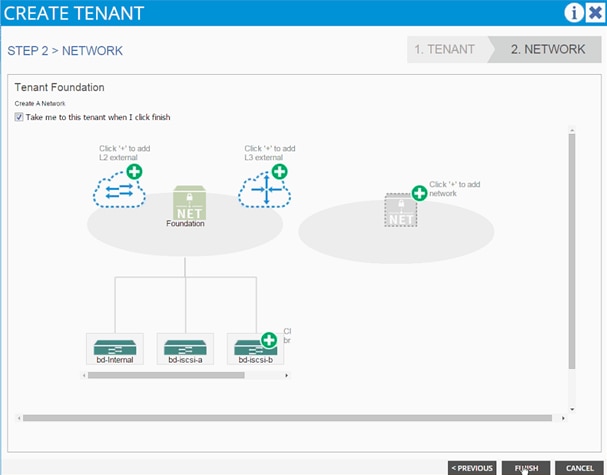

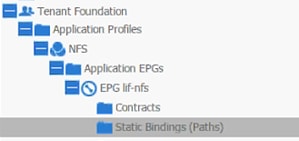

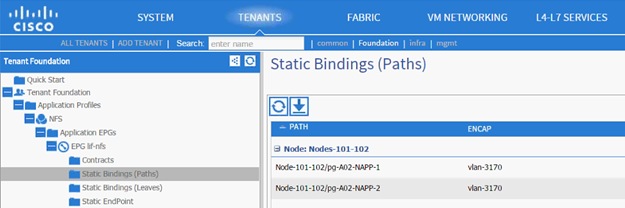

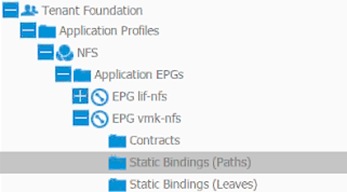

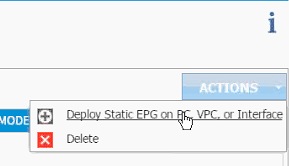

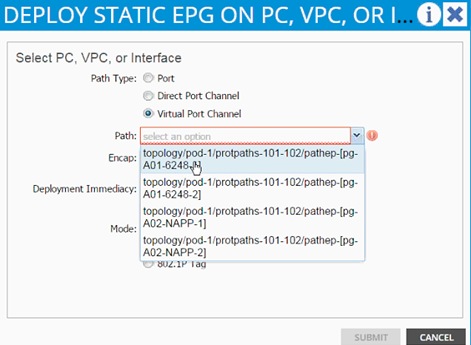

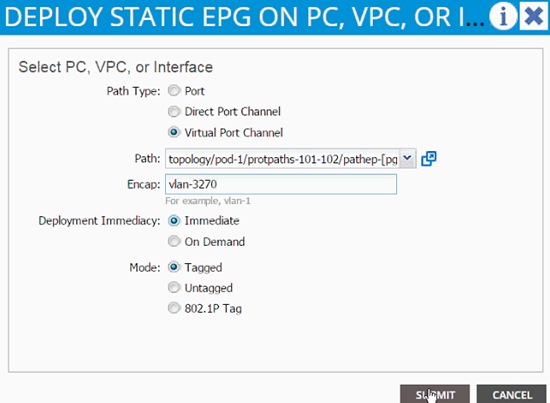

Cisco ACI – Deploying Infrastructure (Foundation) Tenant

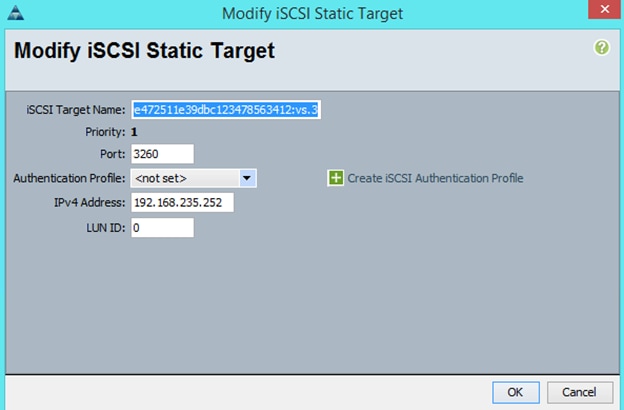

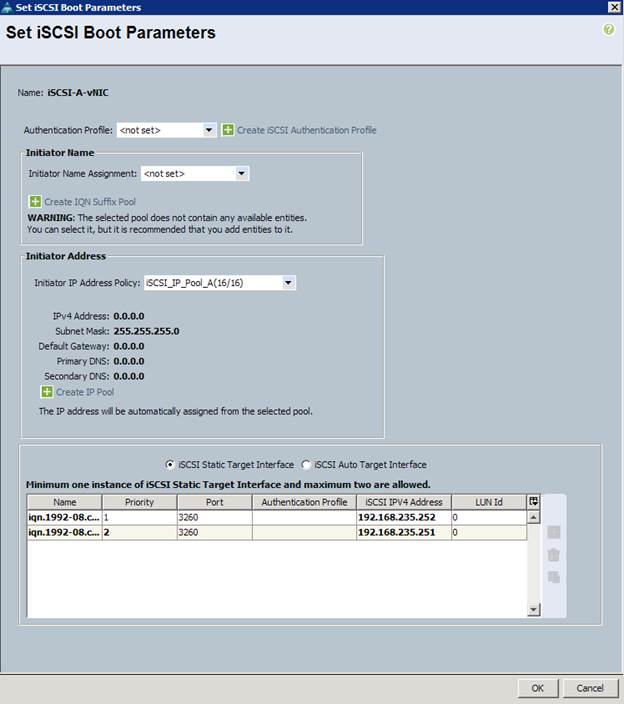

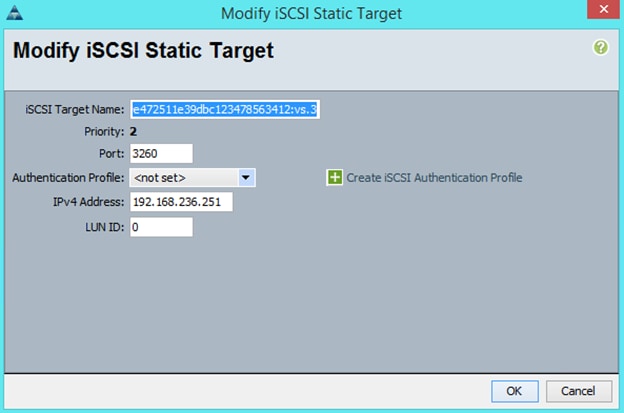

Storage Configuration – SAN Boot

Clustered Data ONTAP SAN Boot Storage Setup

Download Cisco Custom Image for ESXi 5.5.0 U2

Log in to Cisco UCS 6200 Fabric Interconnect

Set Up VMware ESXi Installation

Set Up Management Networking for ESXi Hosts

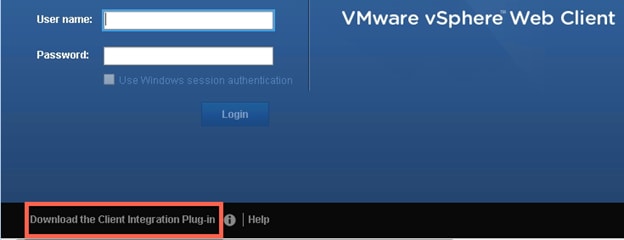

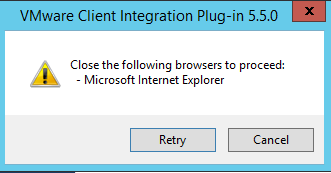

Download VMware vSphere Client

Download VMware vSphere CLI 5.5

Log in to VMware ESXi Hosts by Using VMware vSphere Client

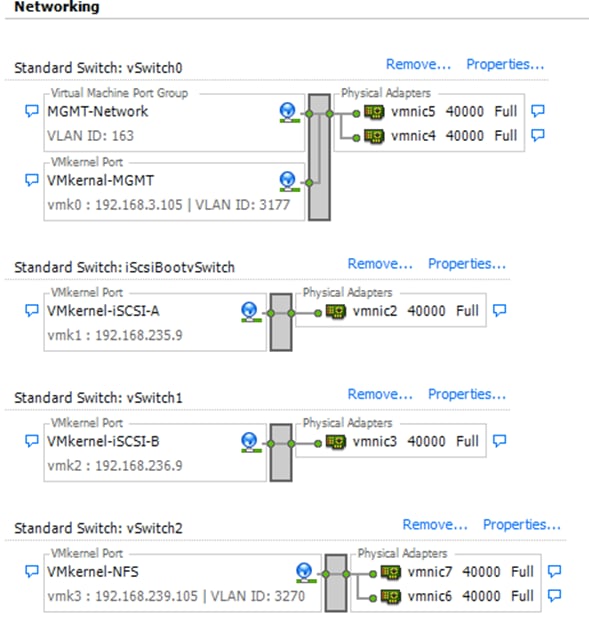

Set Up VMkernel Ports and Virtual Switch

Install VMware Drivers for the Cisco Virtual Interface Card (VIC)

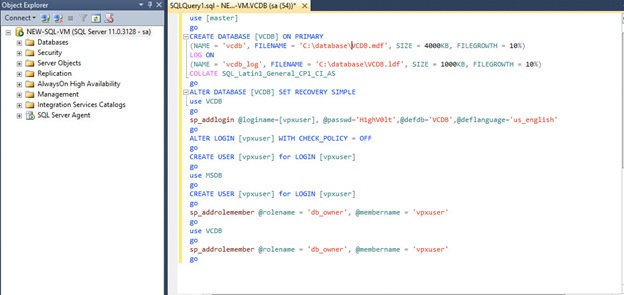

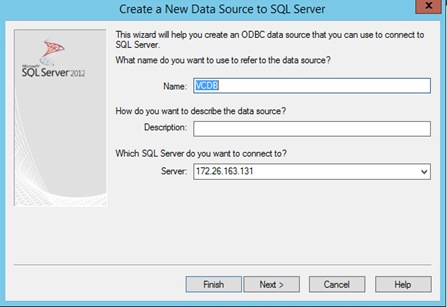

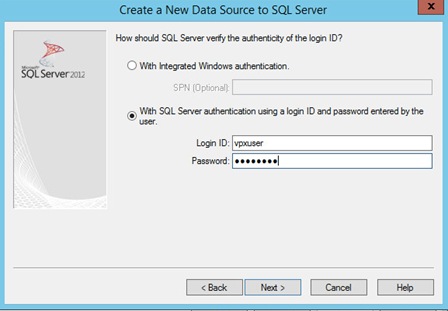

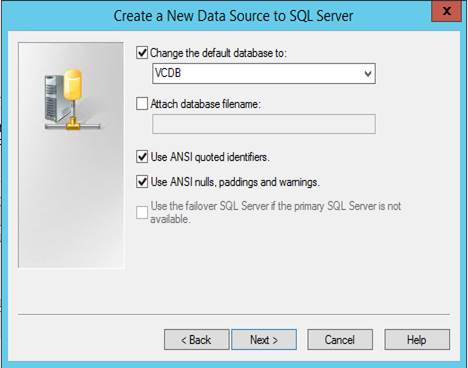

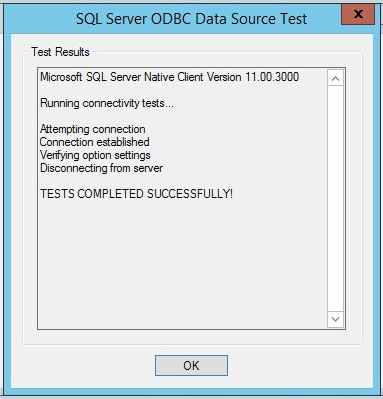

Install Microsoft SQL Server 2012 SP1

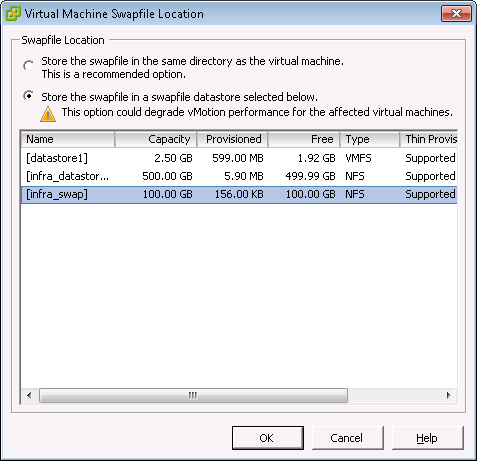

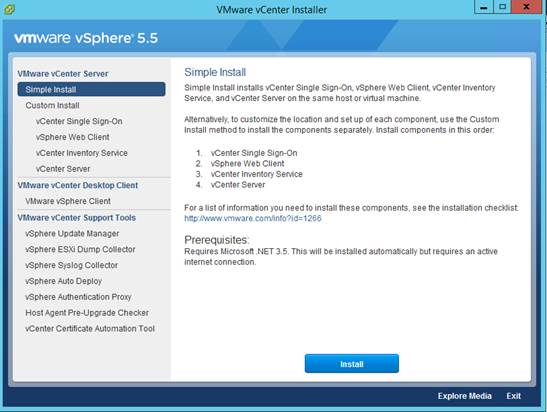

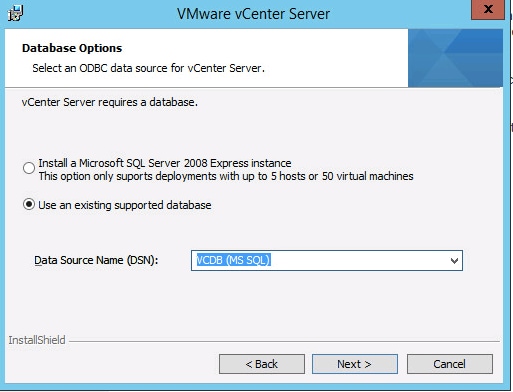

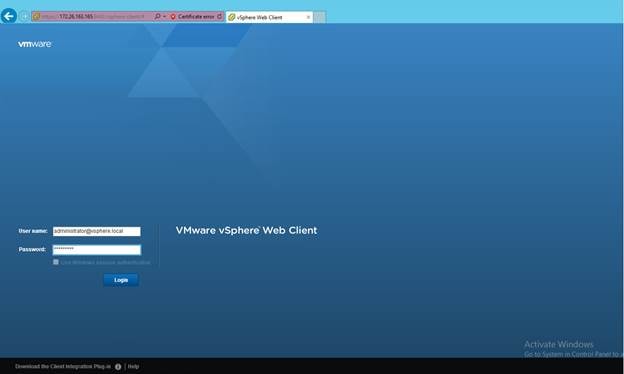

Build and Set Up VMware vCenter Virtual Machine

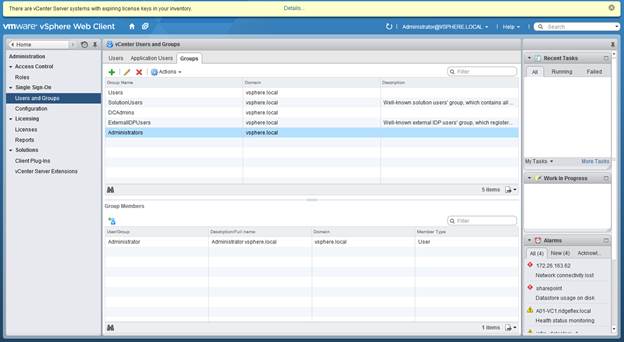

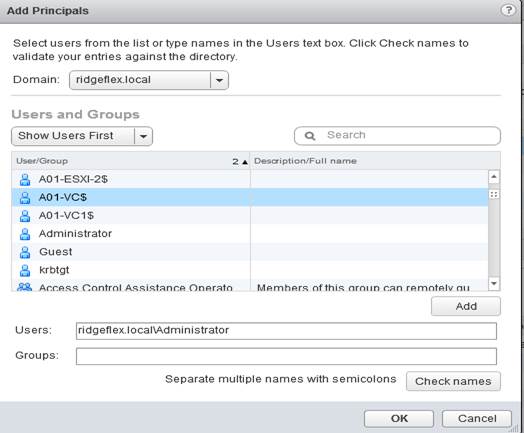

Adding the AD Account to Administrator Group

Set Up vCenter Server with a Datacenter, Cluster, DRS and HA

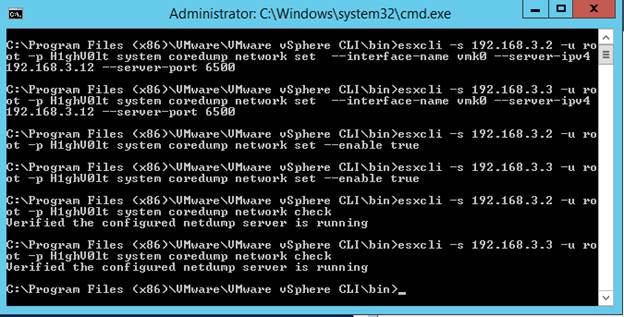

ESXi Dump Collector Setup for iSCSI-Booted Hosts

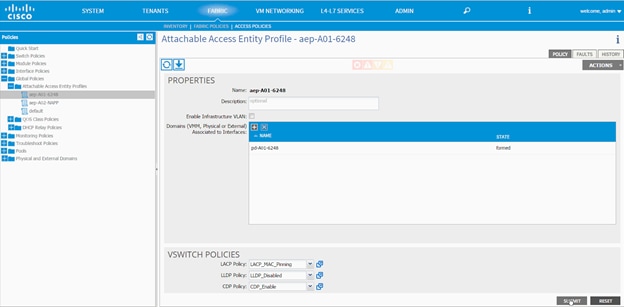

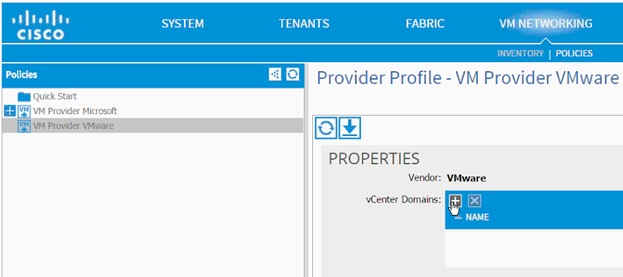

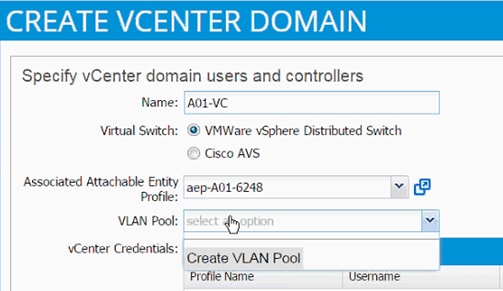

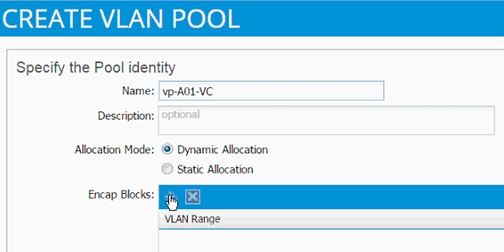

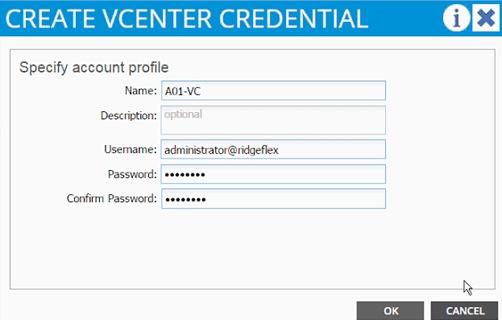

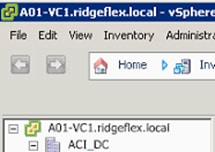

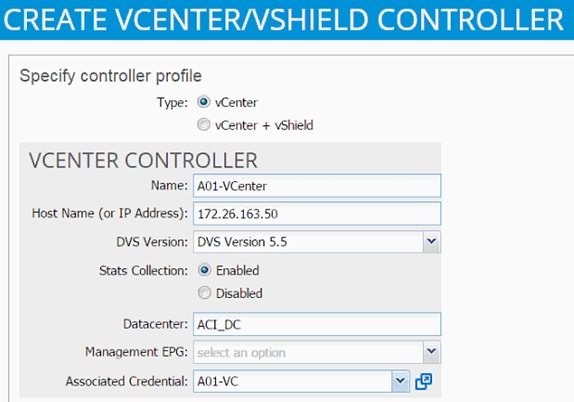

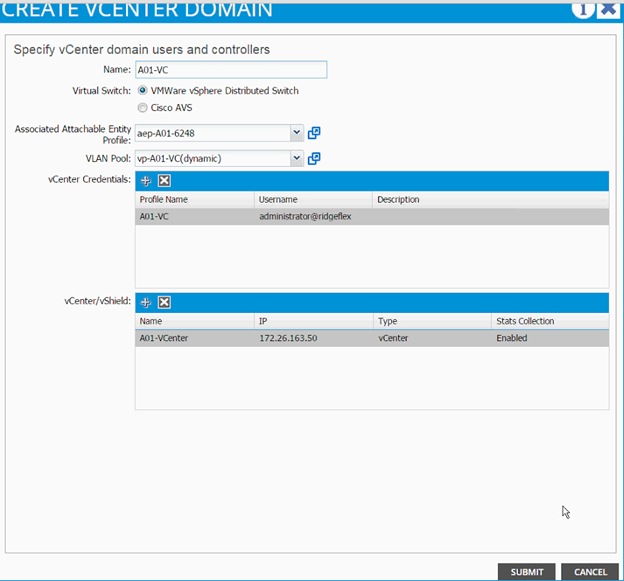

Cisco ACI – Virtual Machine Manager

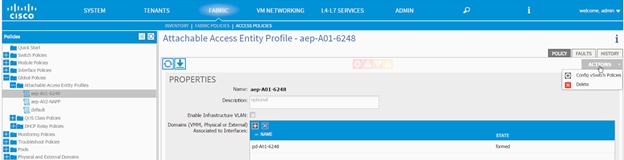

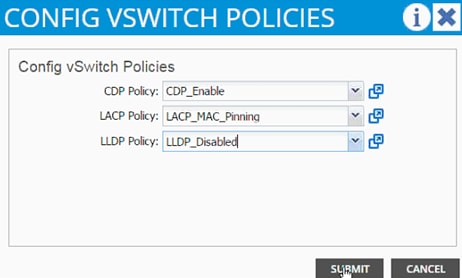

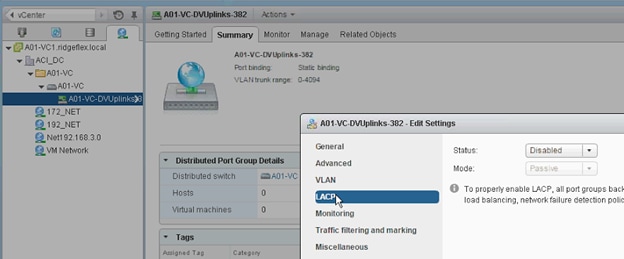

Defining VMware Distributed Switch policies

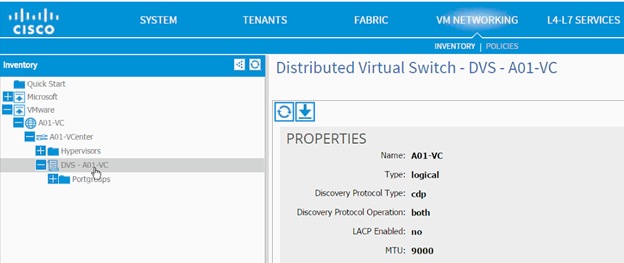

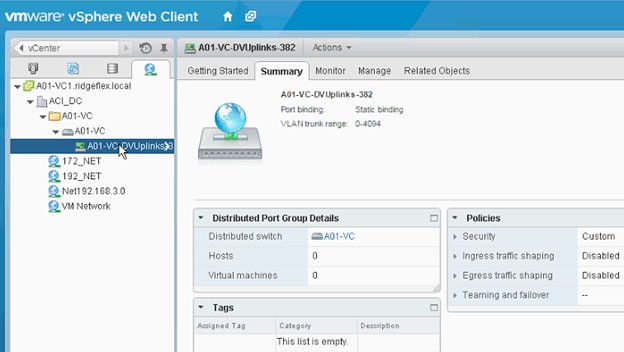

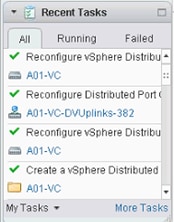

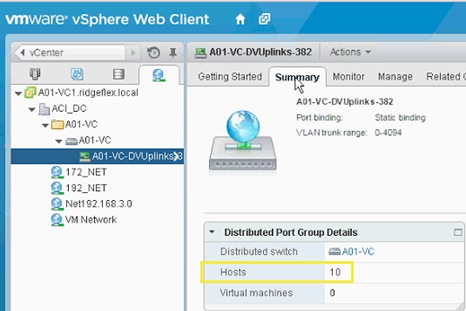

VMware Distributed Switch - Deployment Validation

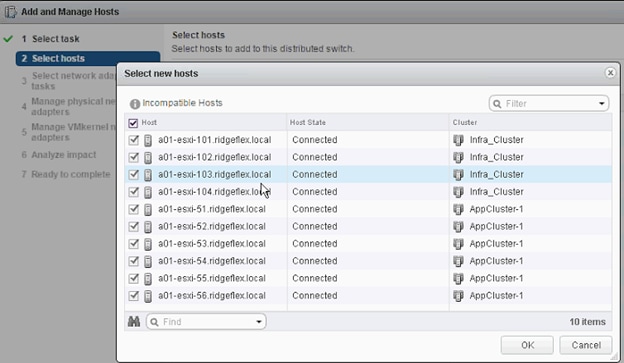

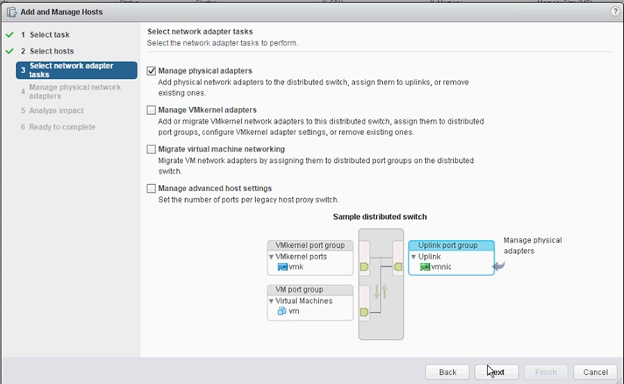

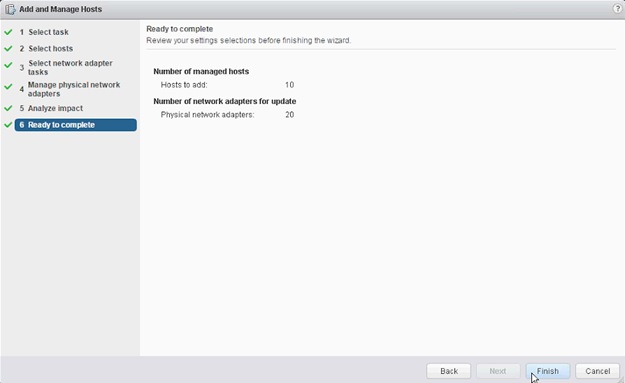

Adding Hosts to VMware Distributed Switch

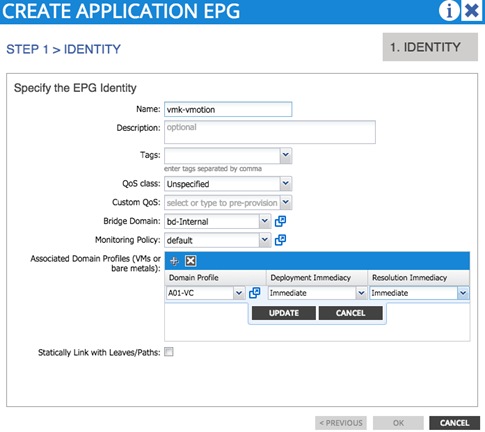

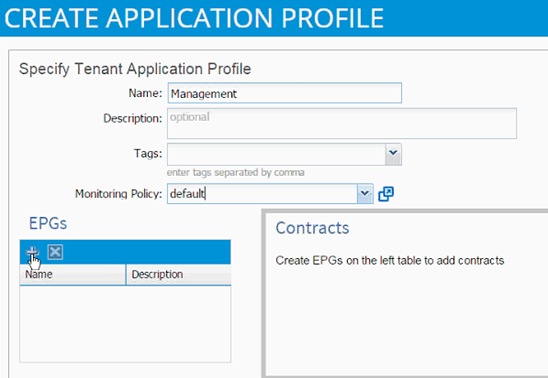

vMotion - Application Profile Creation

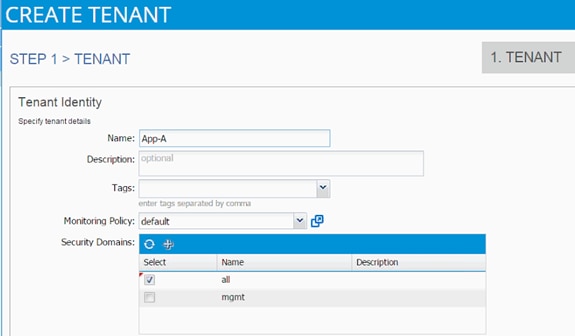

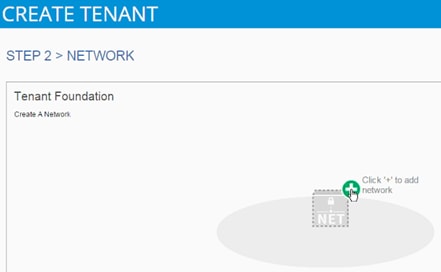

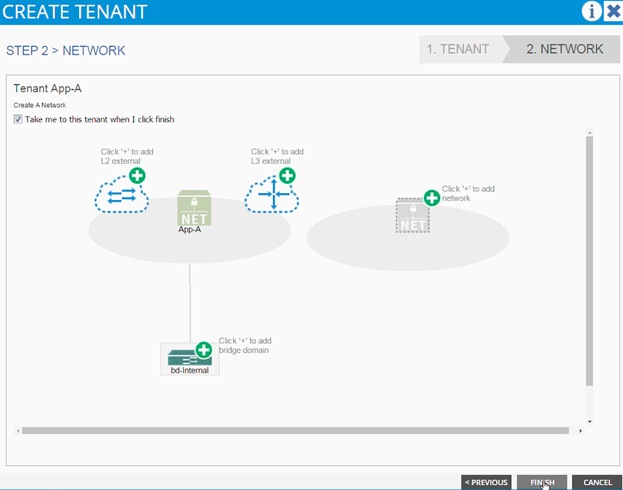

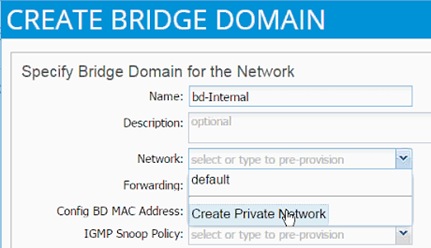

Cisco ACI – Deploying a Tenant

Application Specific SVM Creation

Broadcast Domains in Clustered Data ONTAP

Storage Virtual Machine (Vserver)

Create Load Sharing Mirror of Vserver Root Volume in Clustered Data ONTAP

iSCSI Service in Clustered Data ONTAP

HTTPS Access in Clustered Data ONTAP

FlexVol in Clustered Data ONTAP

Deduplication in Clustered Data ONTAP

NFS LIF in Clustered Data ONTAP

iSCSI LIF in Clustered Data ONTAP (Optional)

Add App-A Vserver Administrator

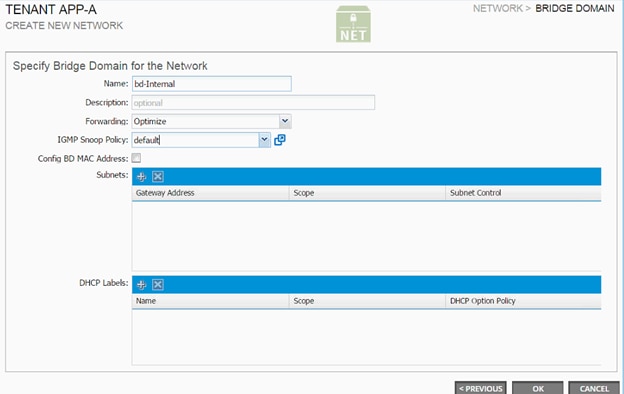

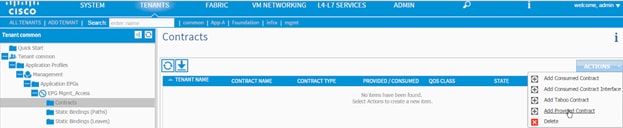

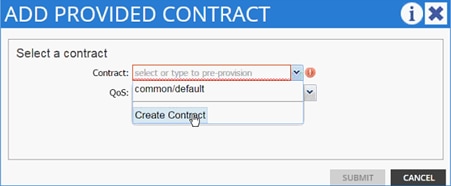

Application Tenant Creation on APIC

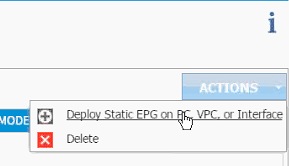

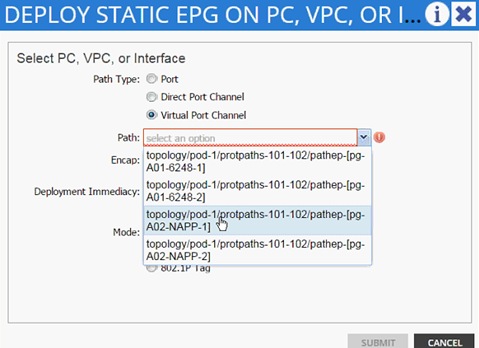

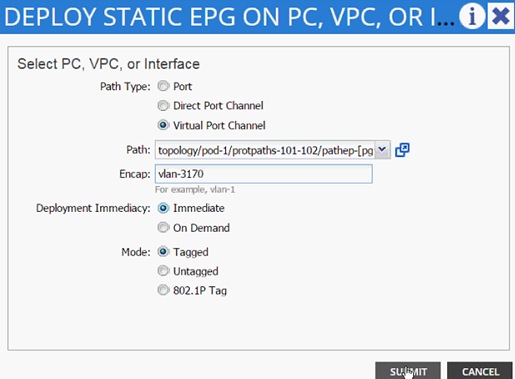

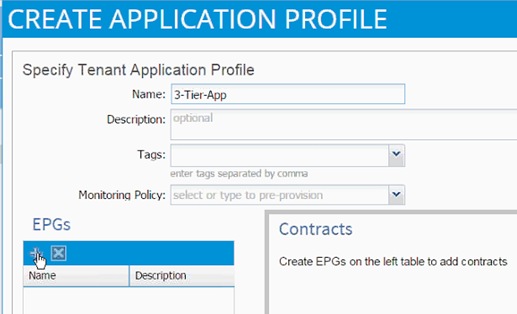

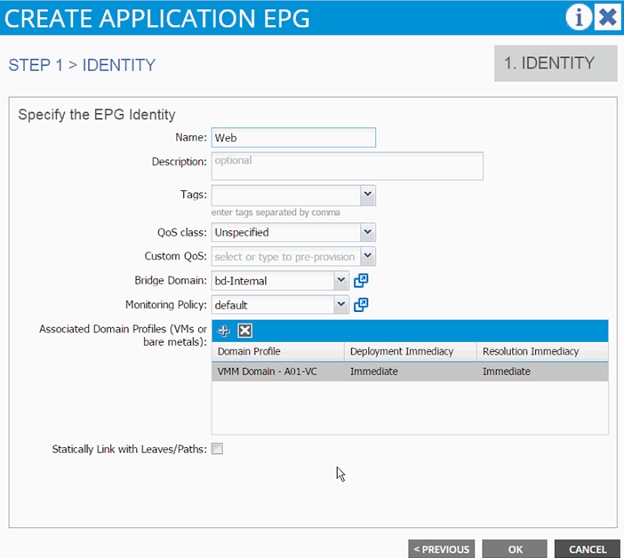

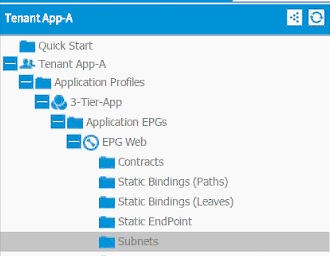

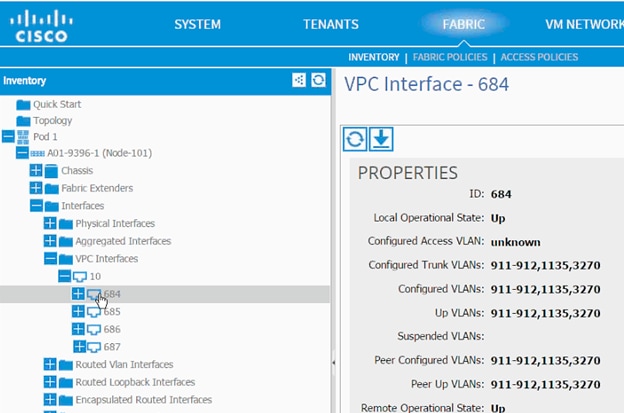

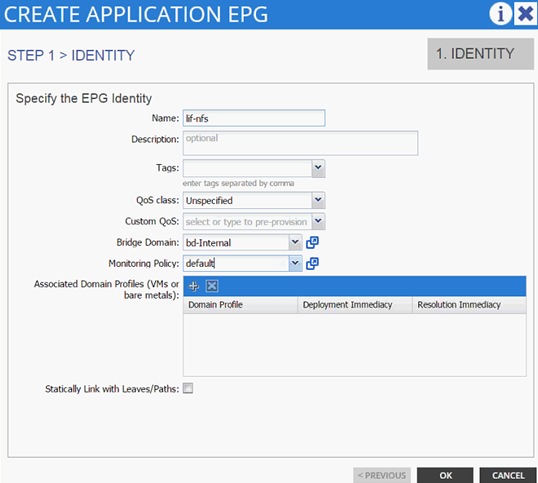

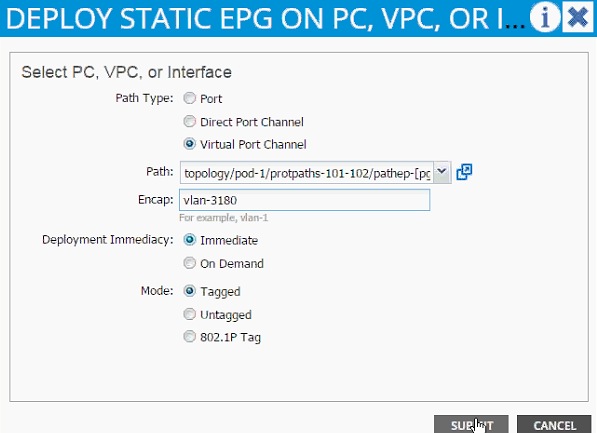

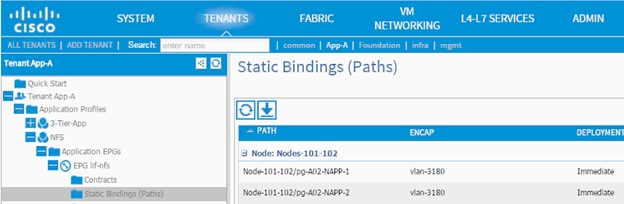

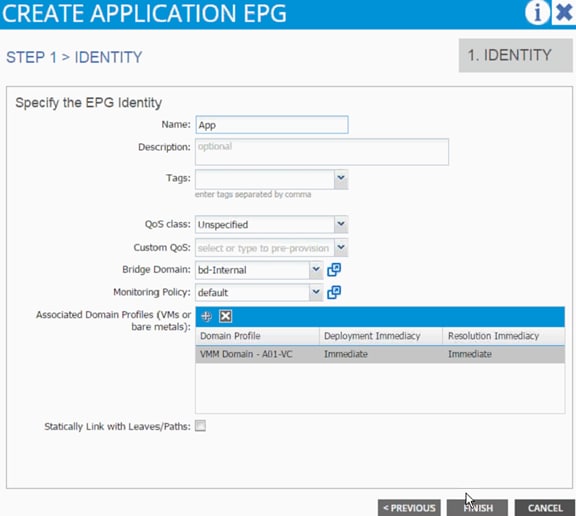

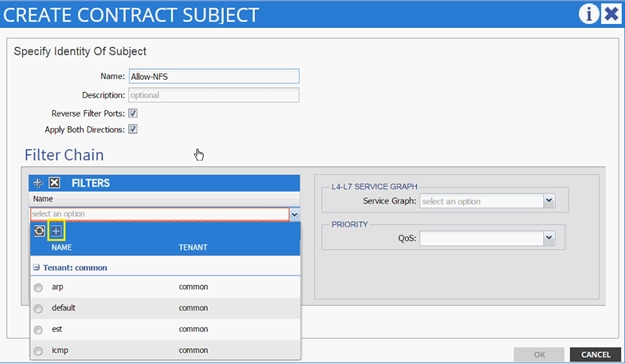

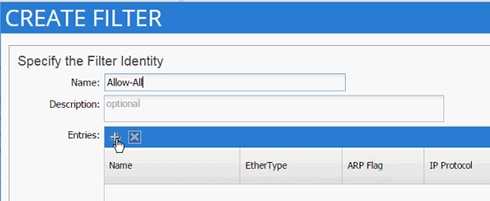

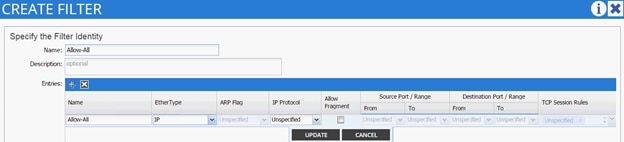

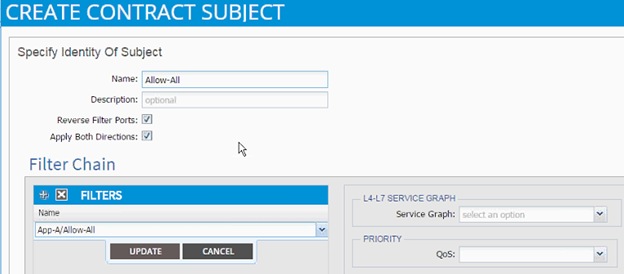

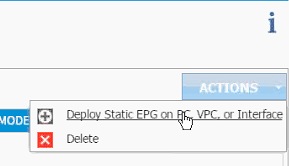

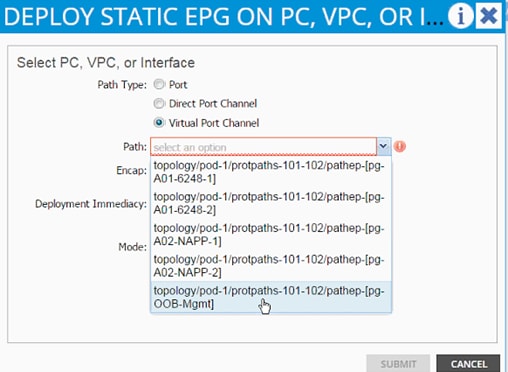

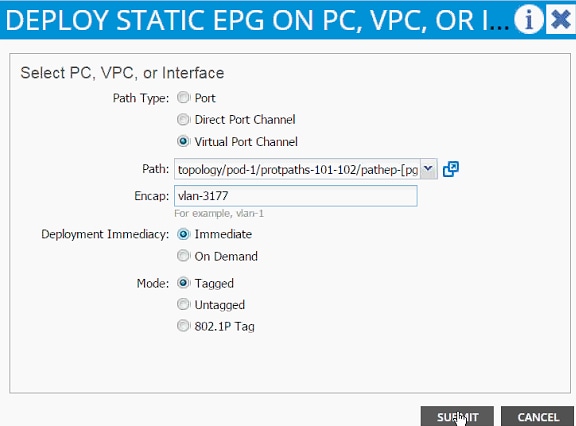

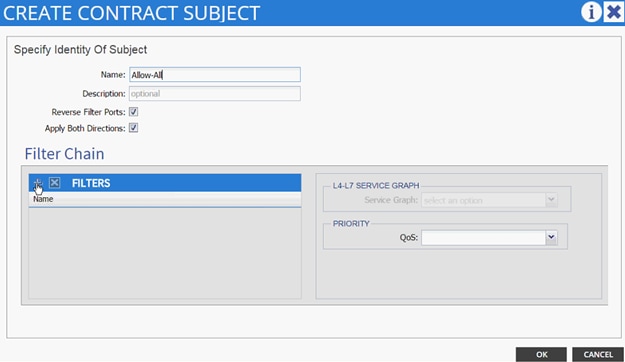

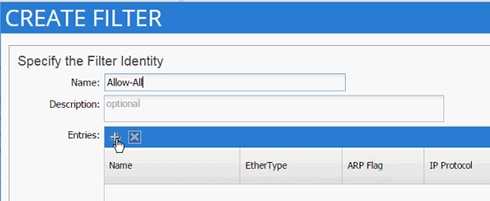

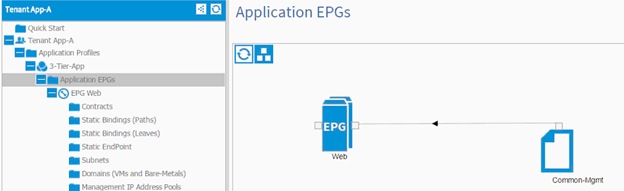

3-Tier App - Application Profile Creation and Adding EPG for Web Tier

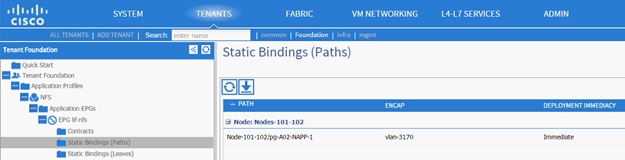

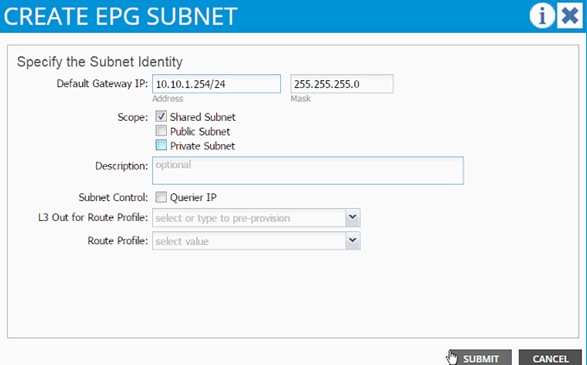

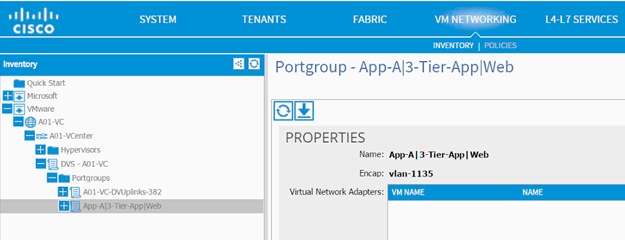

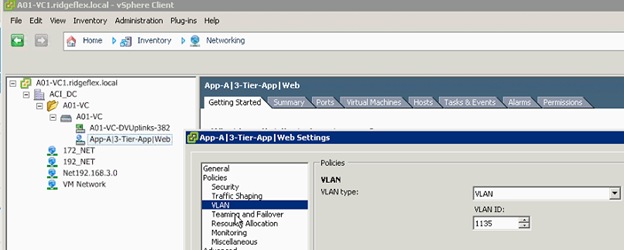

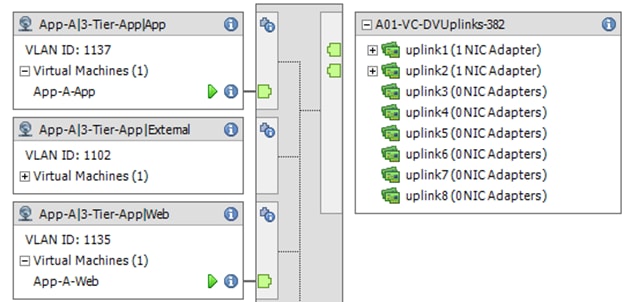

Port-Group Deployment Validation

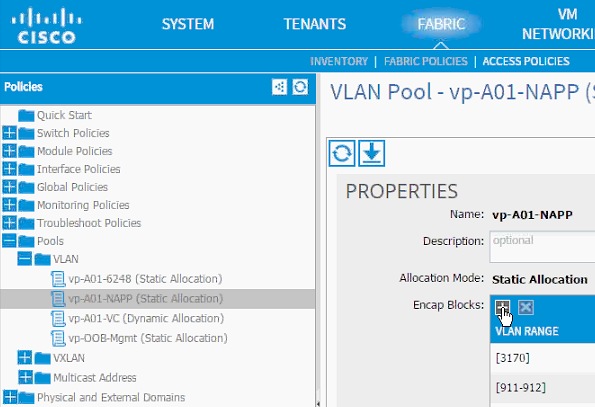

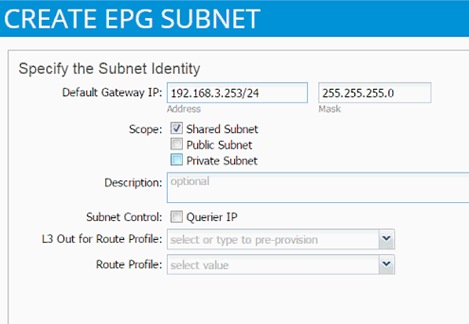

Modifying the Storage (NetApp) Physical Domain VLANs

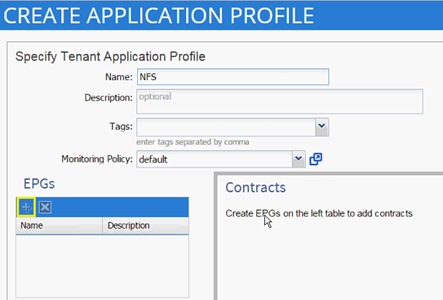

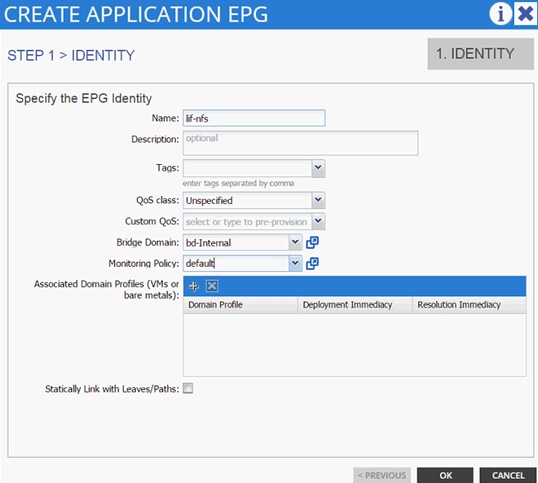

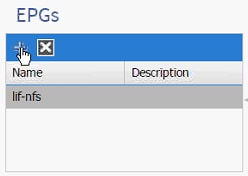

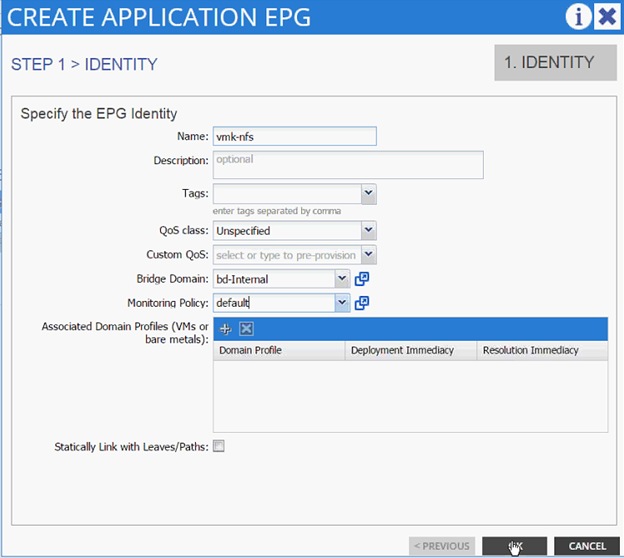

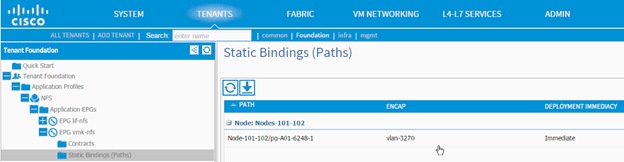

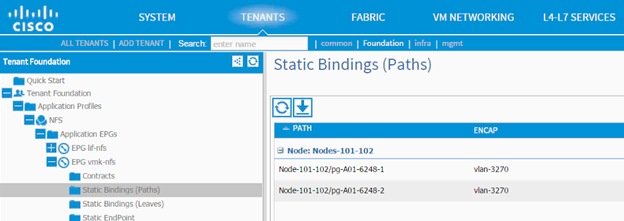

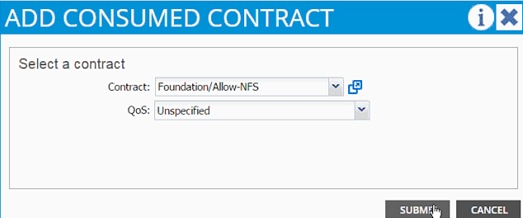

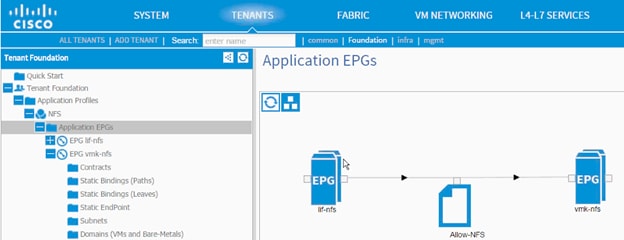

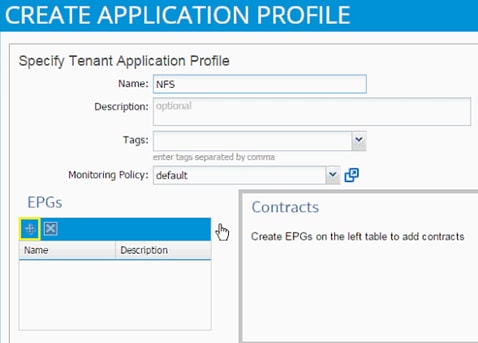

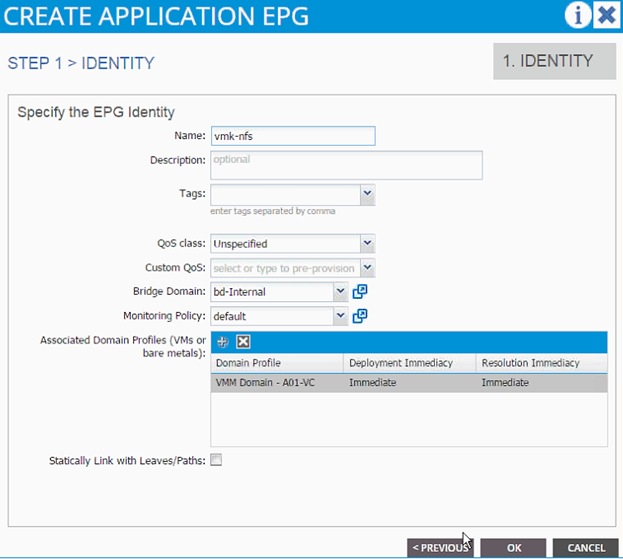

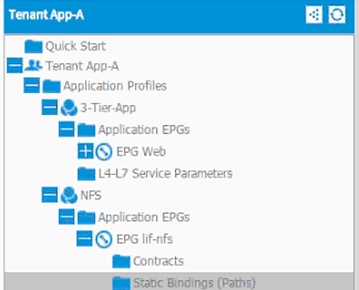

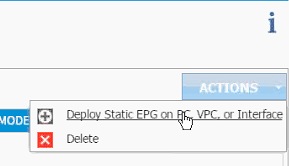

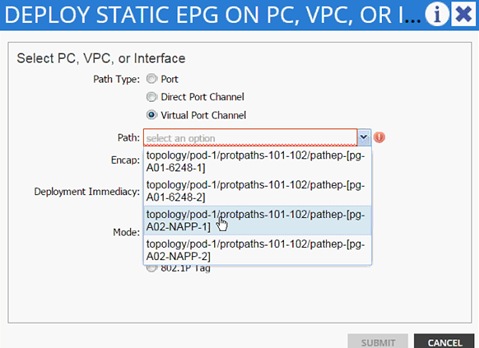

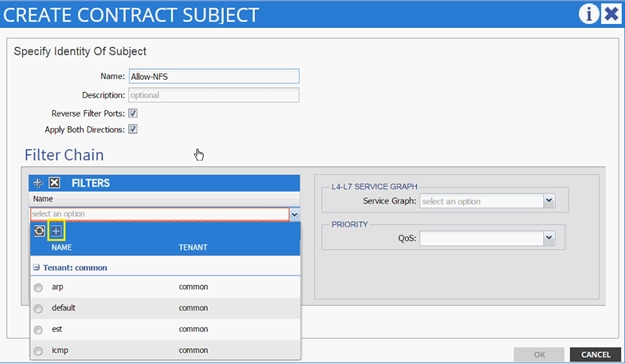

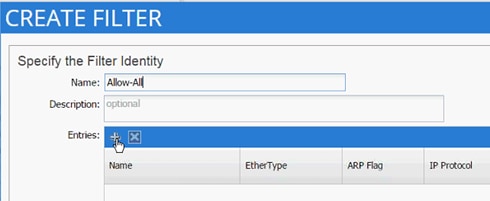

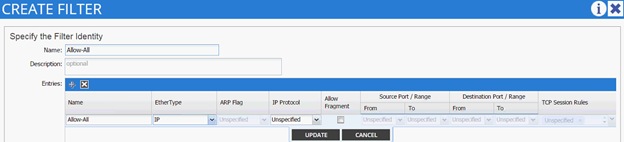

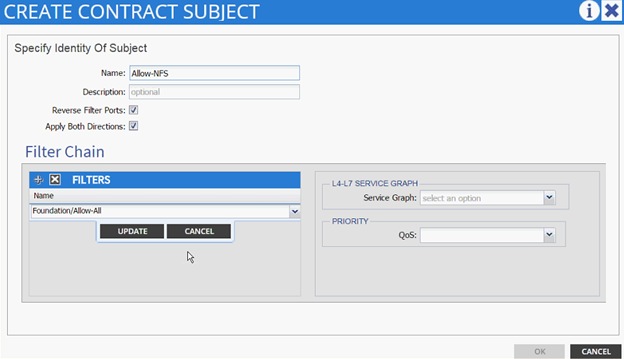

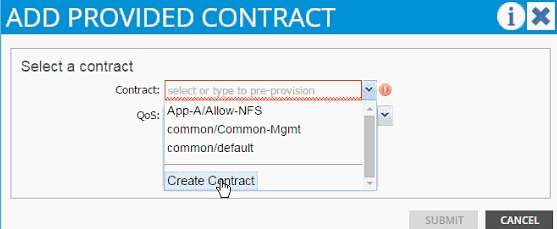

NFS - Application Profile Creation

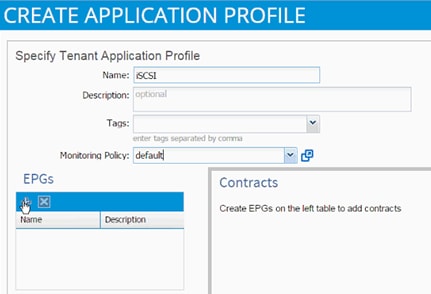

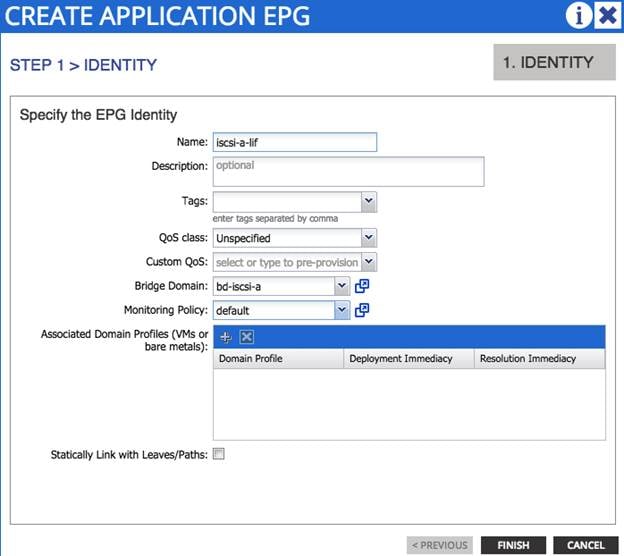

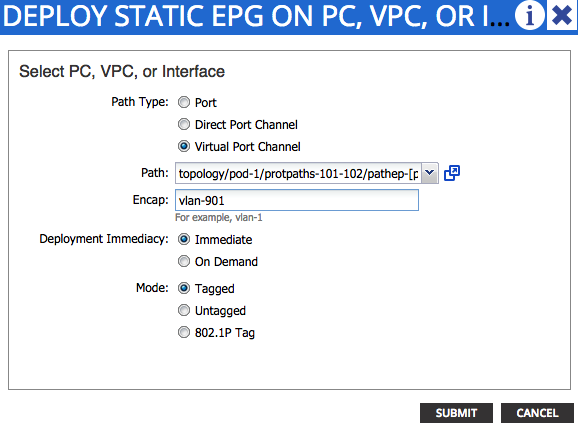

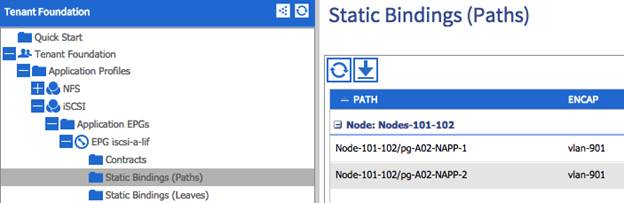

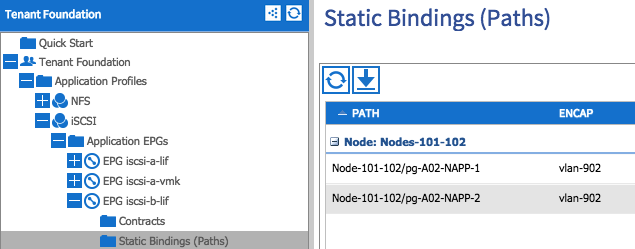

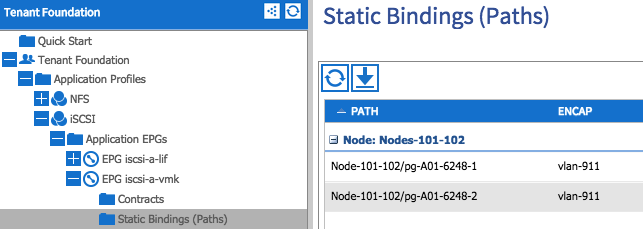

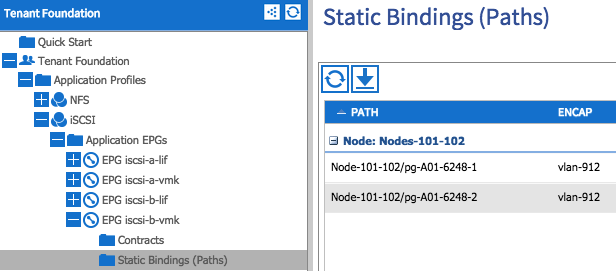

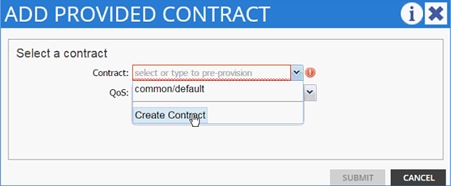

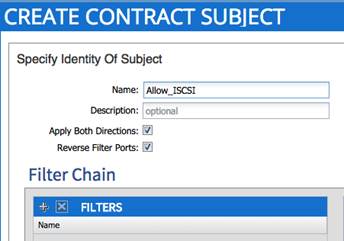

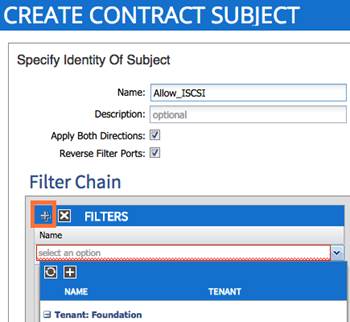

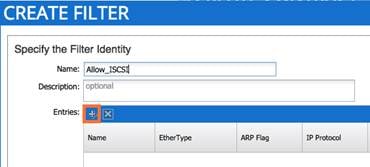

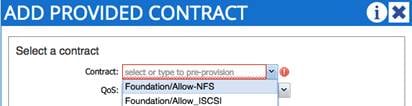

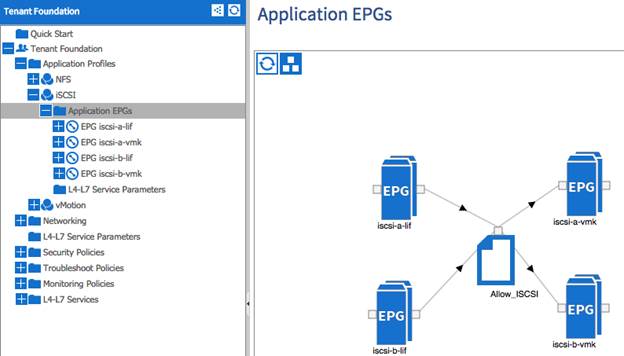

iSCSI Application Profile Creation (Optional)

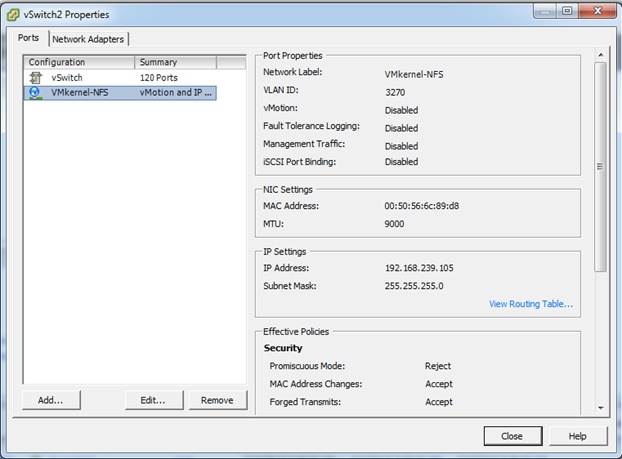

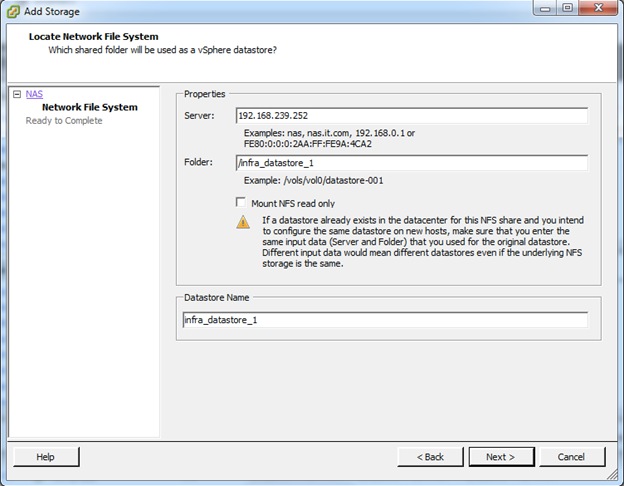

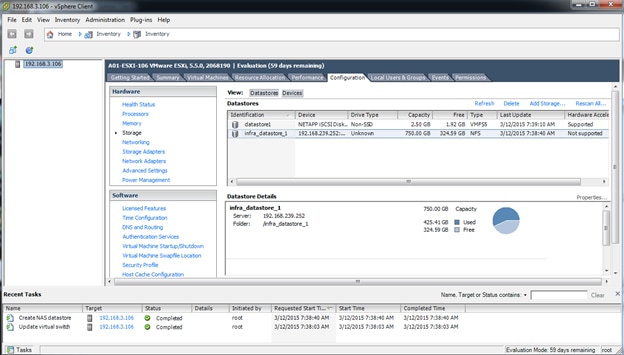

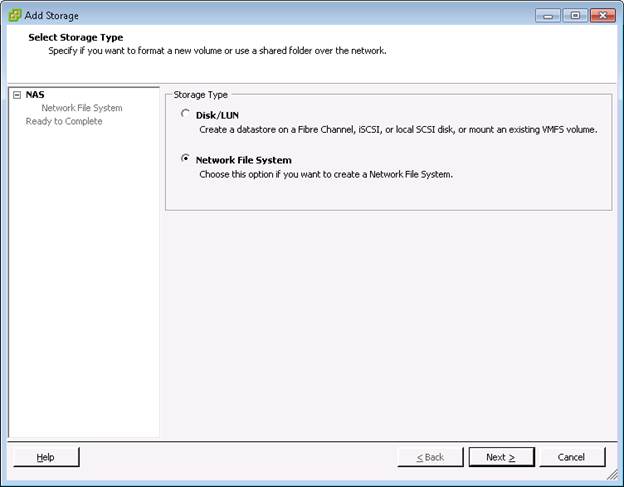

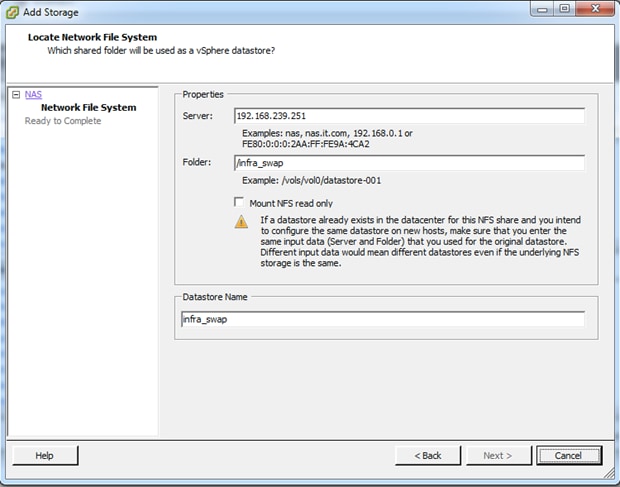

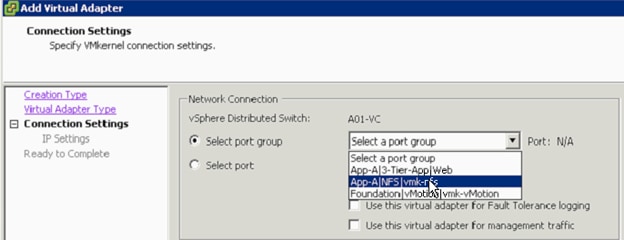

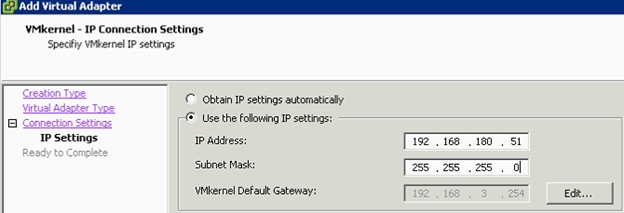

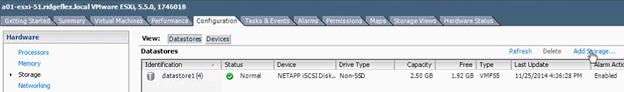

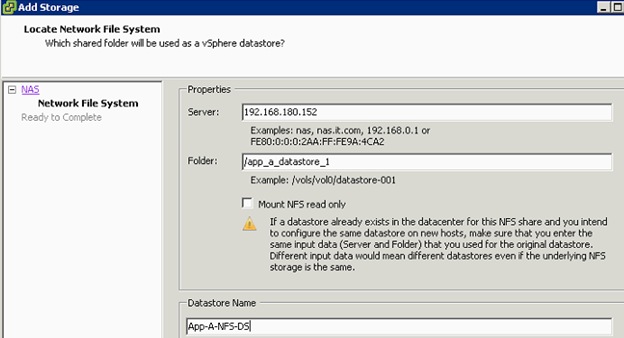

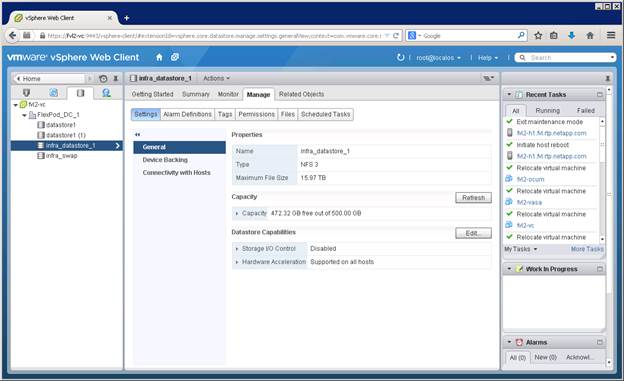

Defining NFS VMKernel Port and Mounting the Datastore

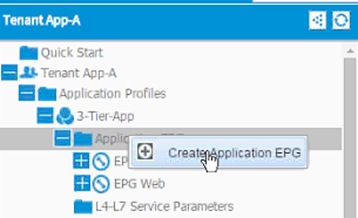

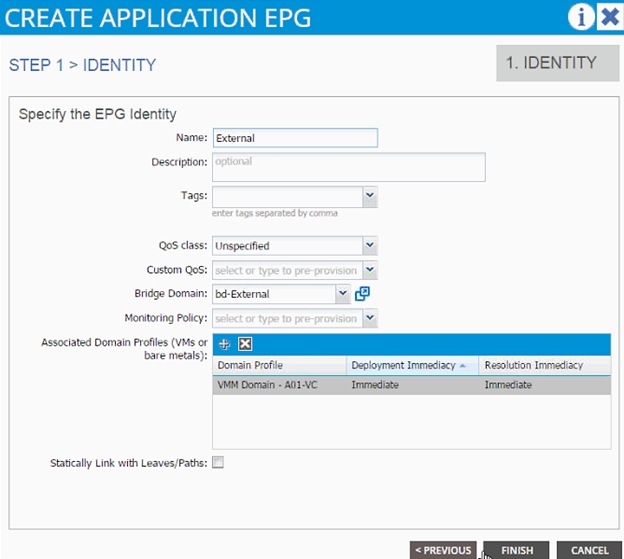

Defining EPGs for additional Application Tiers (Optional)

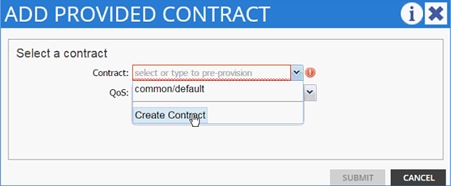

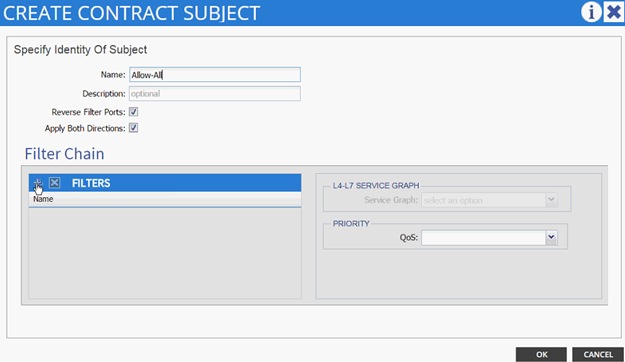

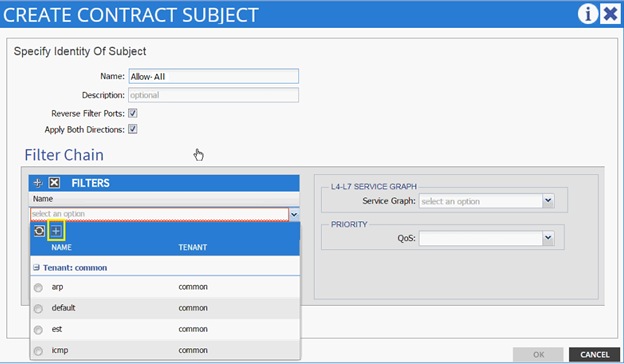

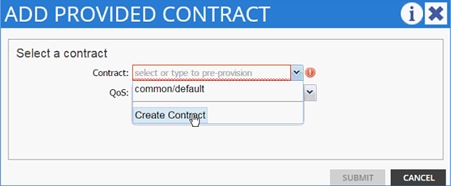

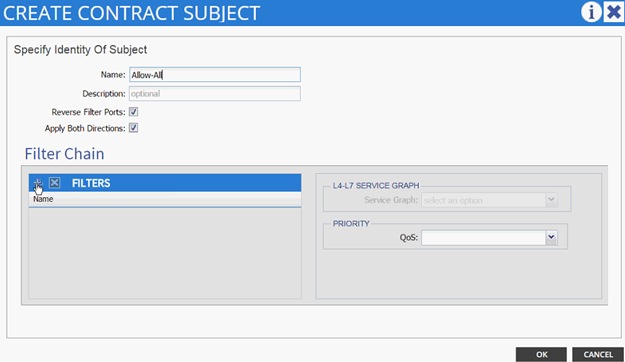

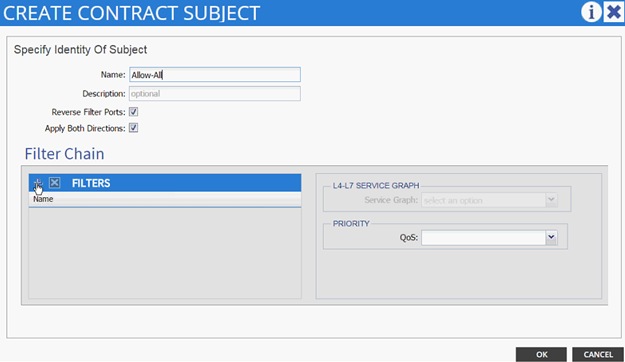

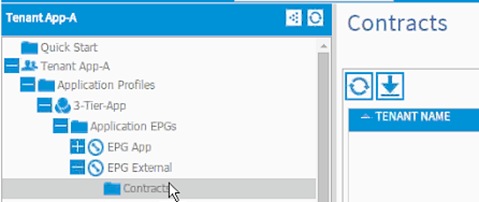

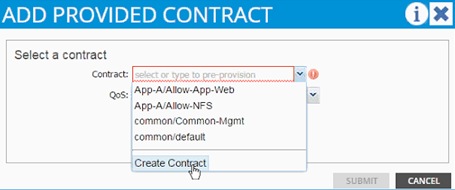

Enabling Communication Between Application Tiers

Cisco ACI – Accessing Common Services

Configuring ACI for Management Switch

Configuring the Management Switch

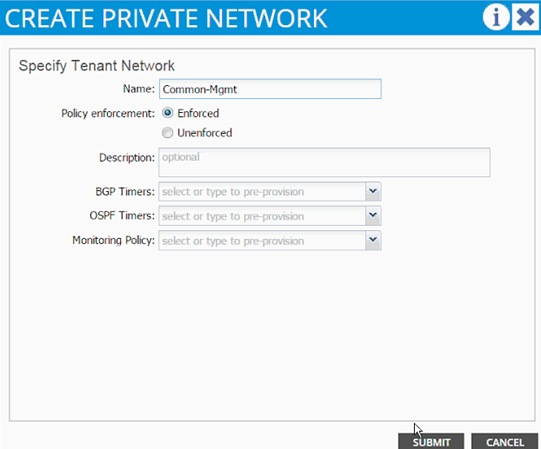

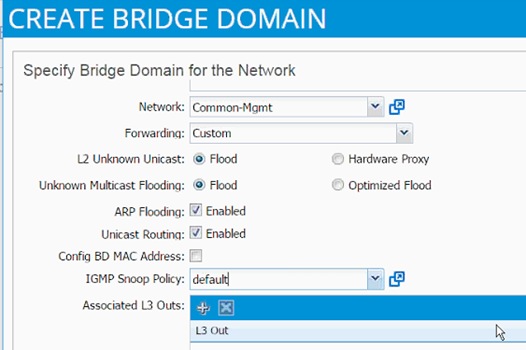

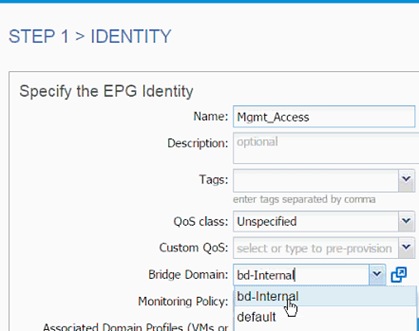

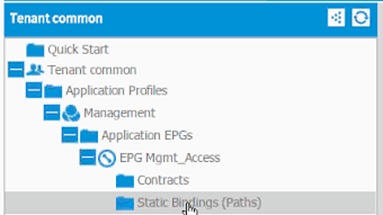

Common Management - Application Profile Creation

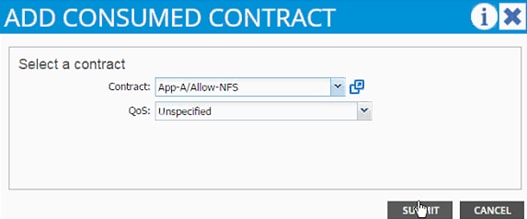

Consuming the Common Contract in Application EPGs

Cisco ACI – Accessing SVM Management Interface from Application Virtual Machines (Optional)

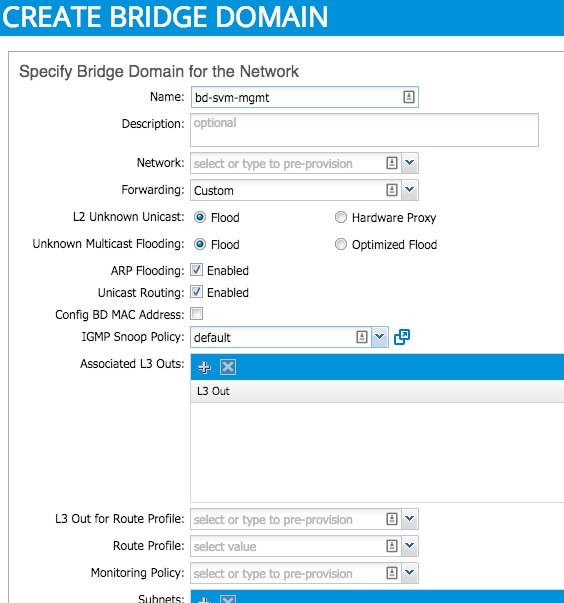

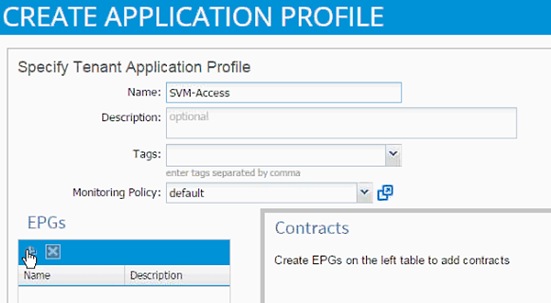

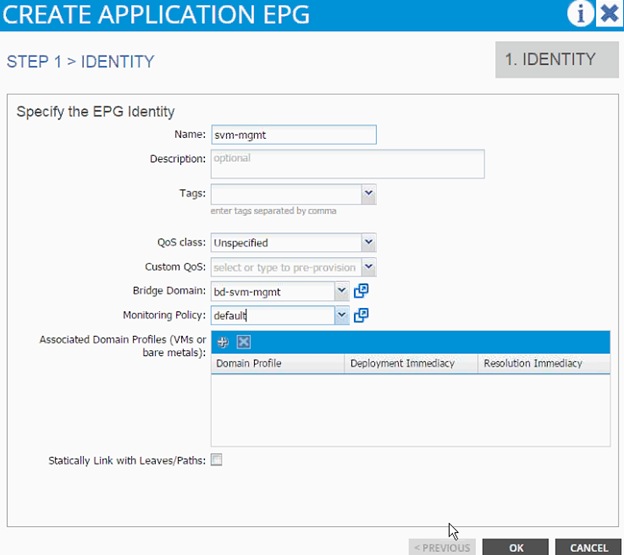

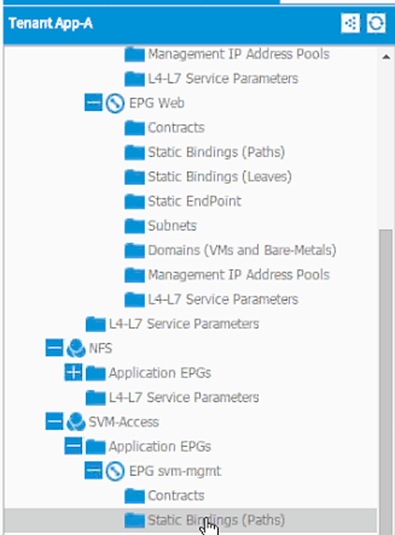

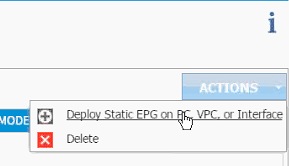

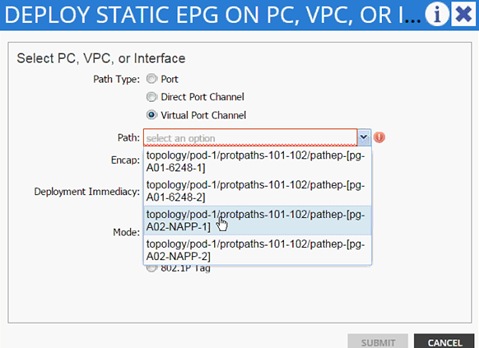

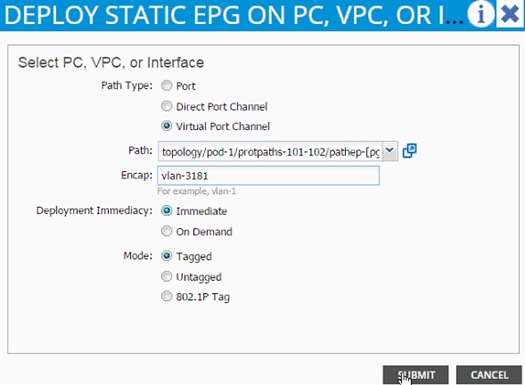

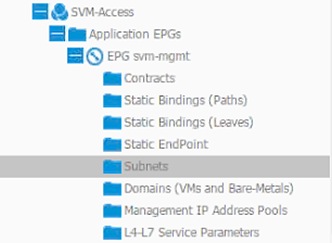

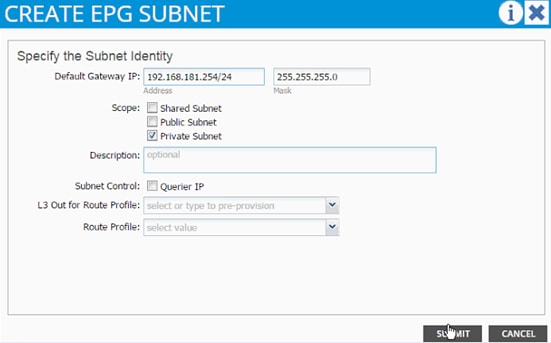

SVM-Access - Application Profile Creation

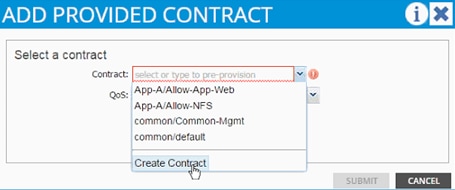

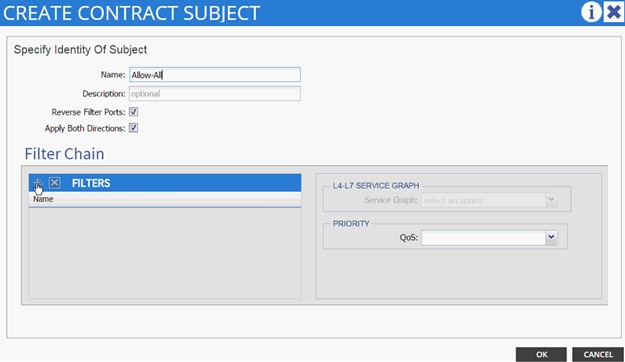

EPG and Contract Configuration

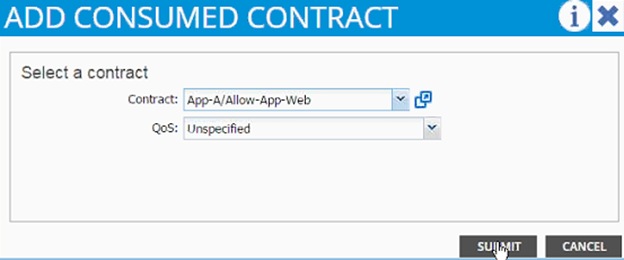

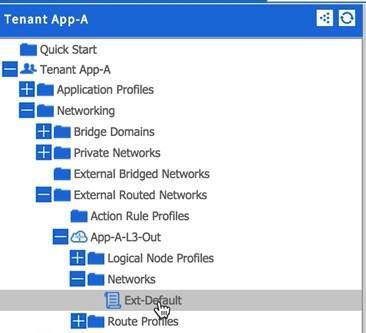

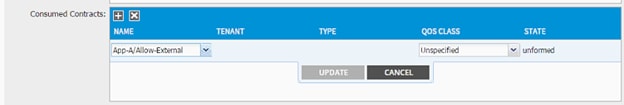

Consuming the SVM Management Contract in an Application Tier (Web)

Cisco ACI – Connecting to Existing Infrastructure

Configuring the Nexus 7000 for ACI Connectivity (Sample)

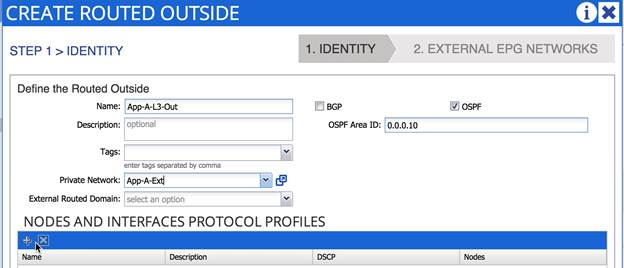

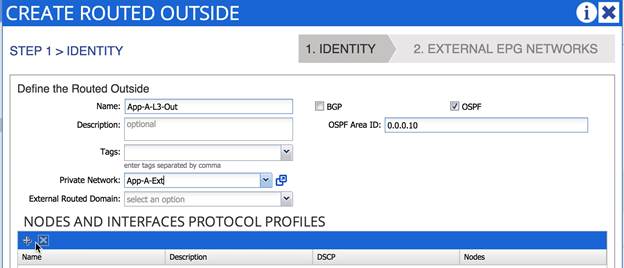

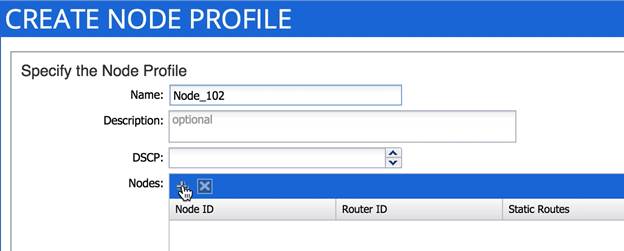

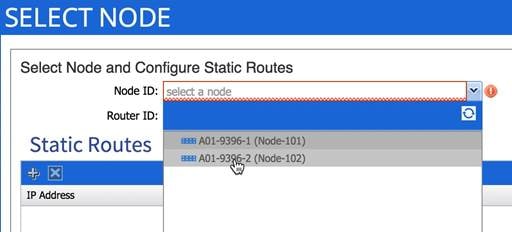

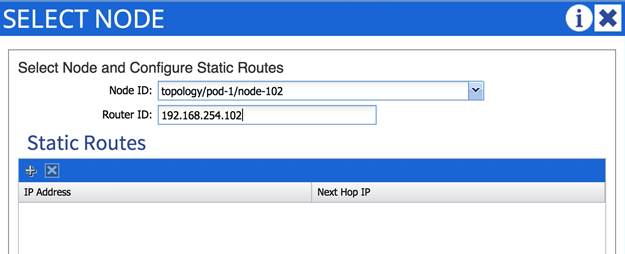

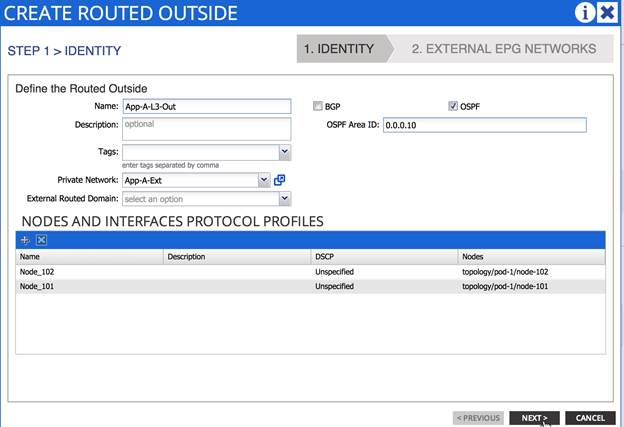

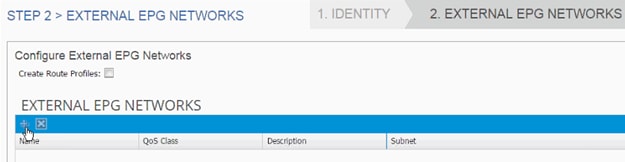

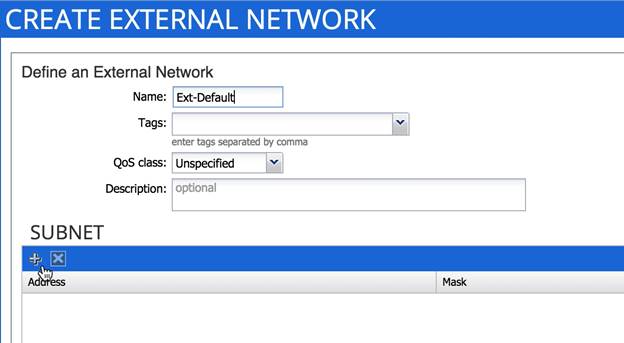

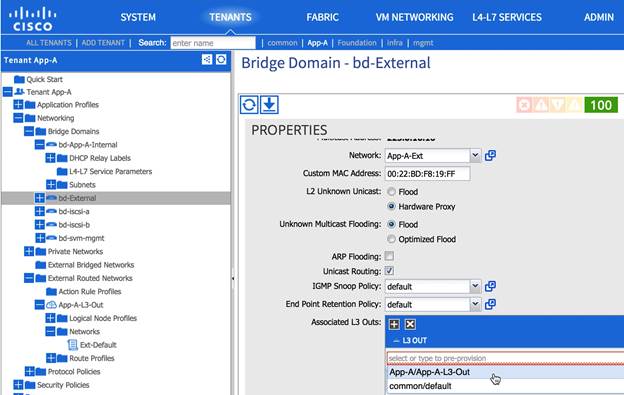

Configuring ACI for External Routed Domain

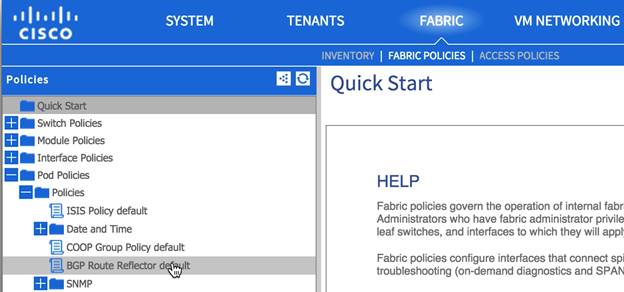

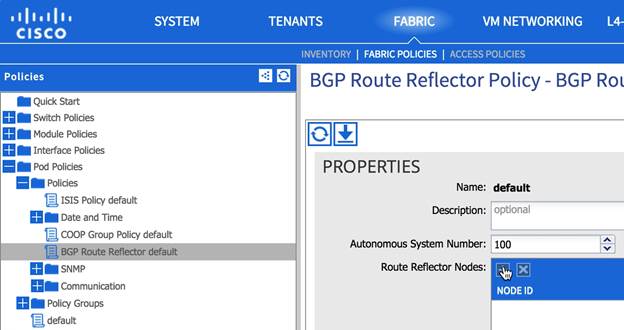

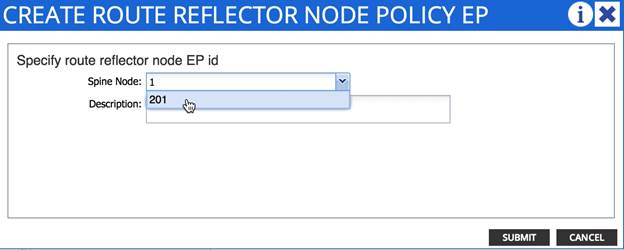

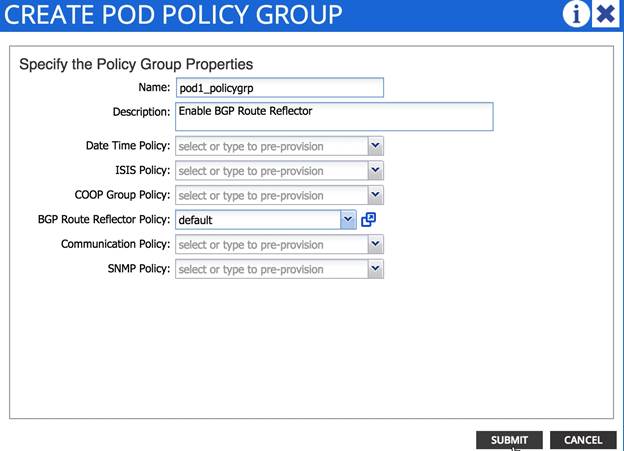

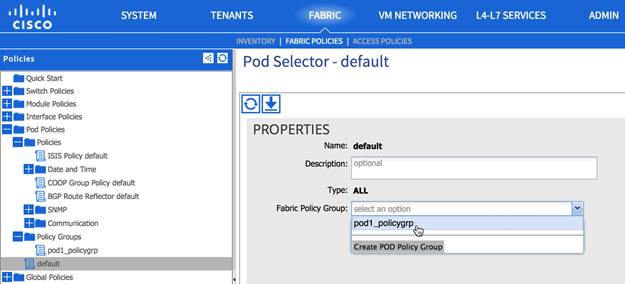

Configuring Multi-Protocol BGP on Spines

FlexPod Management Tools Setup

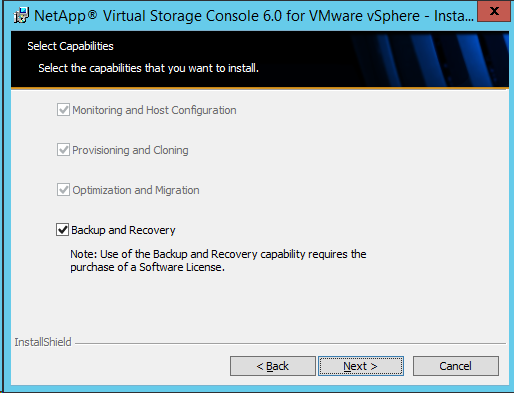

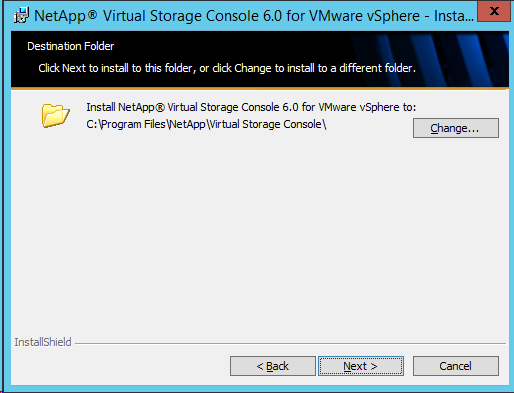

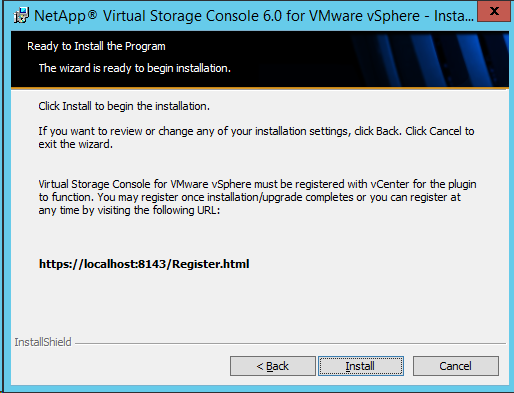

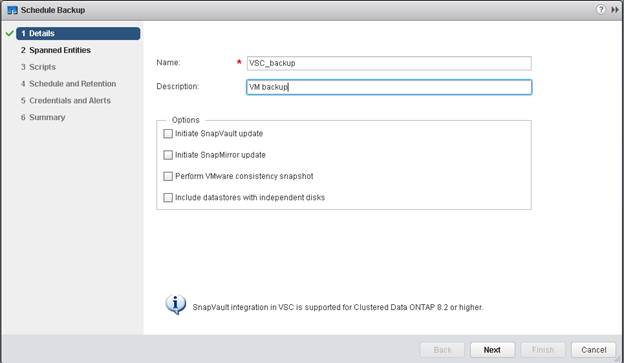

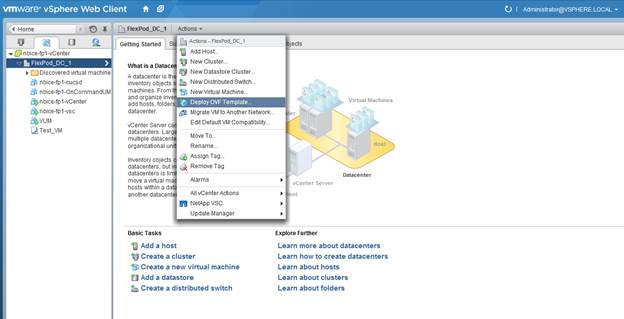

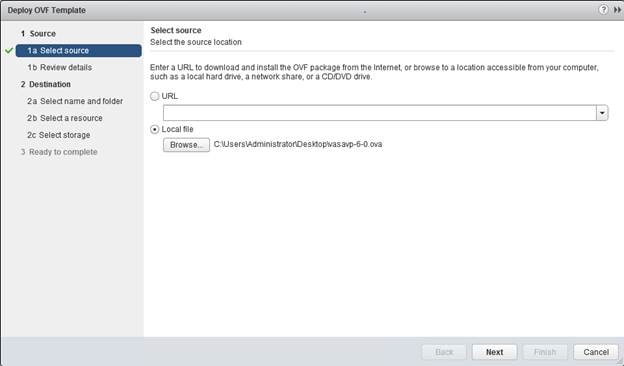

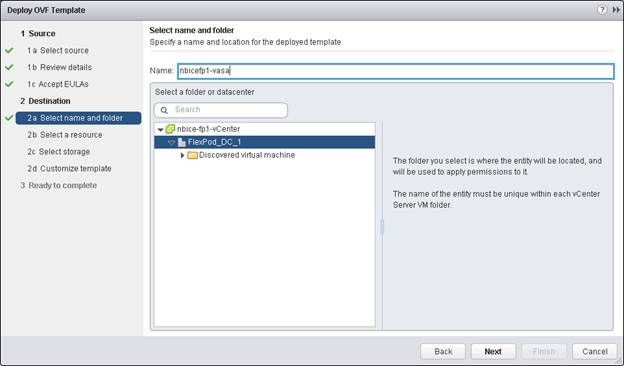

NetApp Virtual Storage Console (VSC) 6.0 Deployment Procedure

VSC 6.0 Pre-installation Considerations

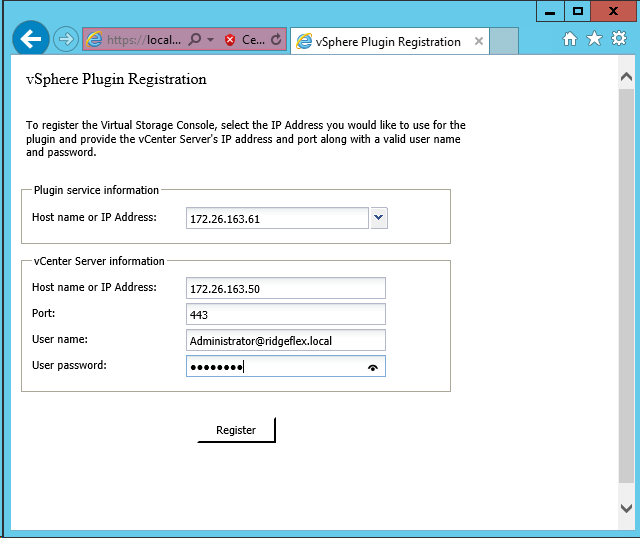

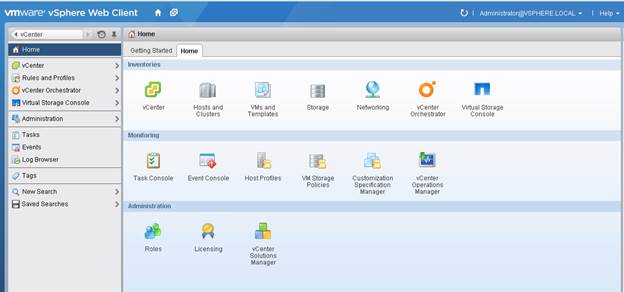

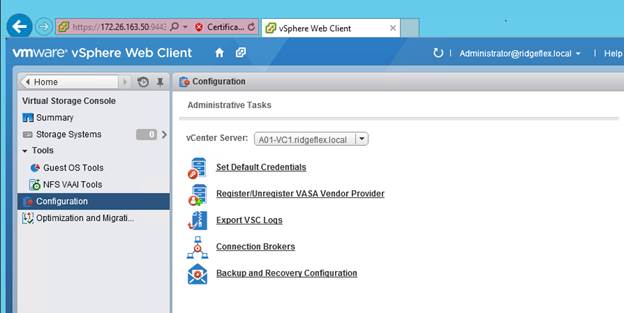

Register VSC with vCenter Server

Discover and Add Storage Resources

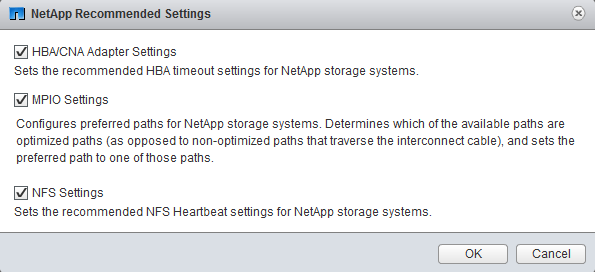

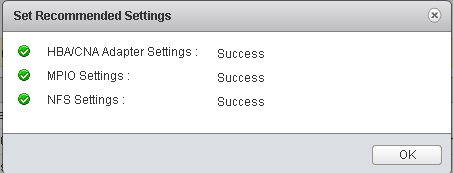

Optimal Storage Settings for ESXi Hosts

NetApp VASA Provider for Clustered Data ONTAP Deployment Procedure

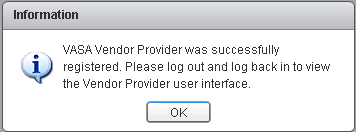

Registering VASA Provider for Clustered Data ONTAP with VSC

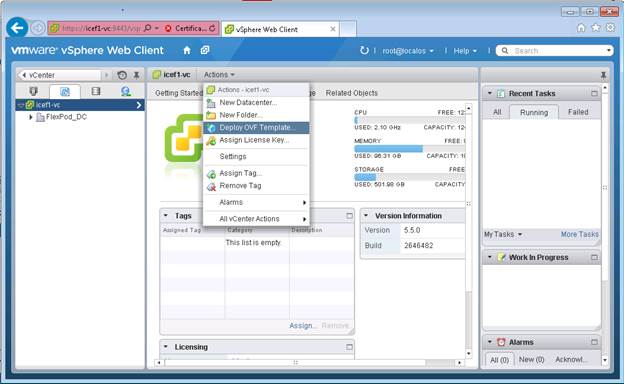

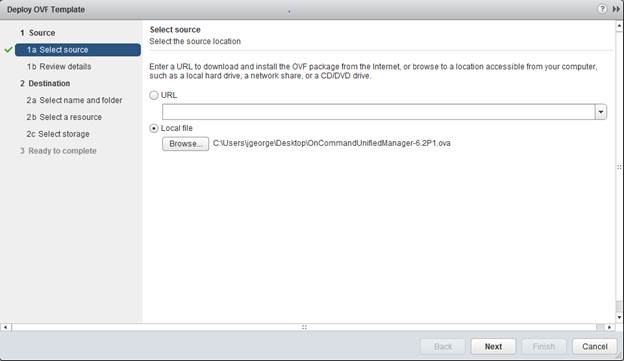

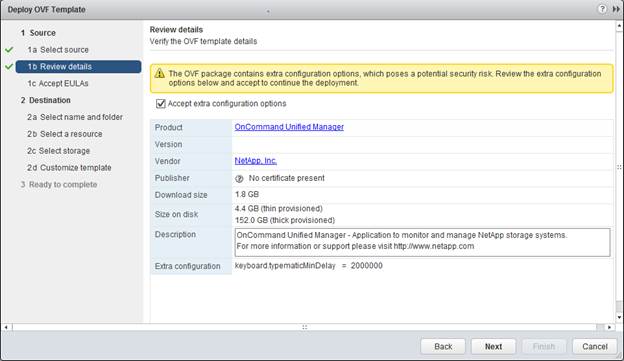

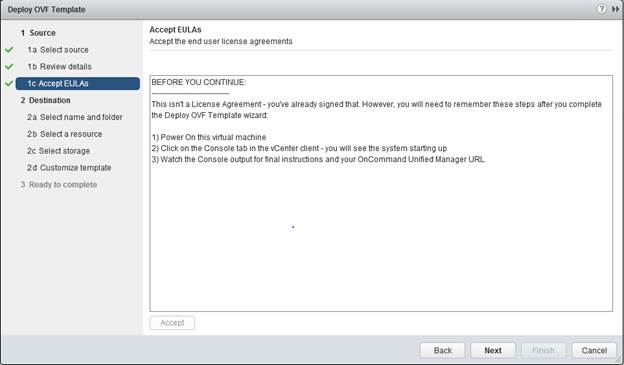

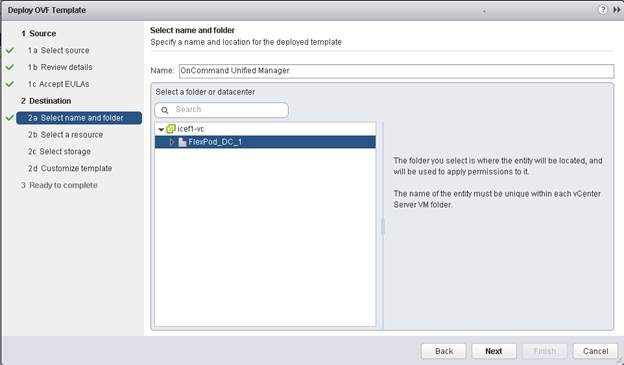

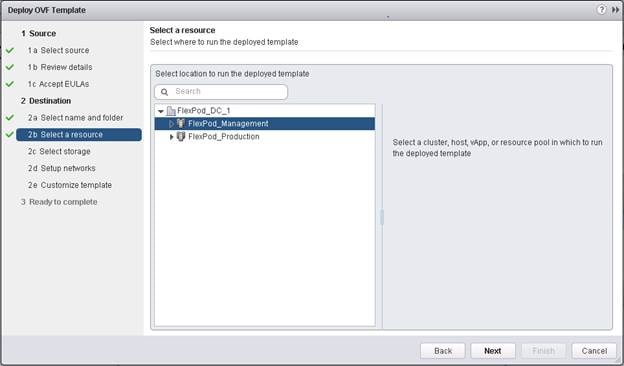

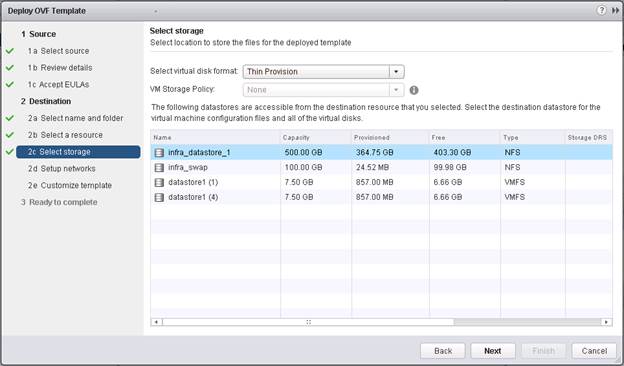

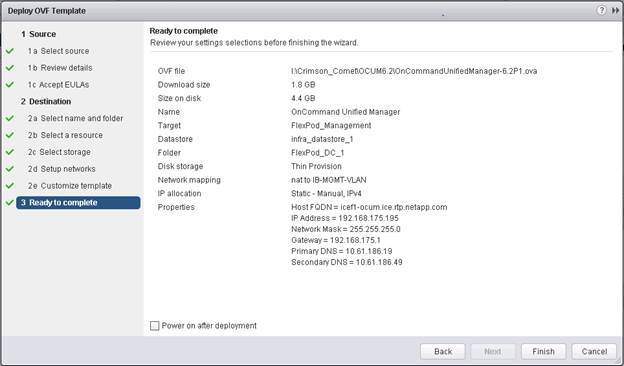

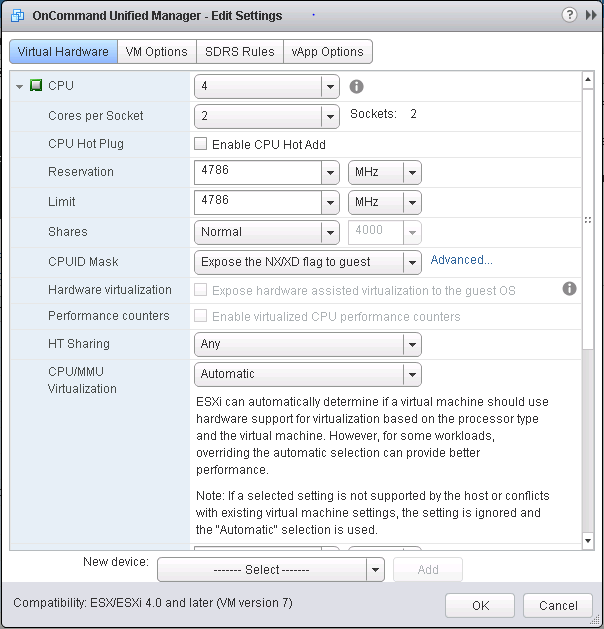

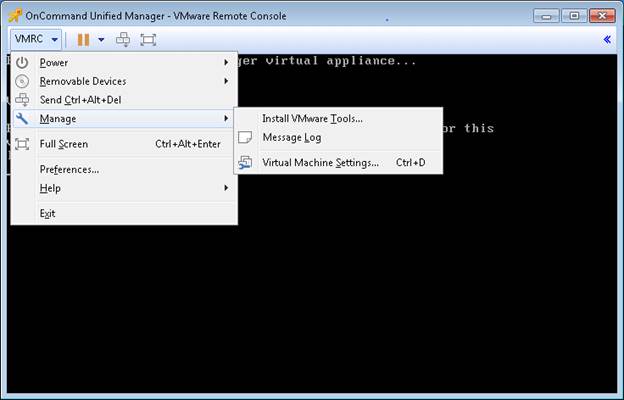

OnCommand Unified Manager OVF Deployment

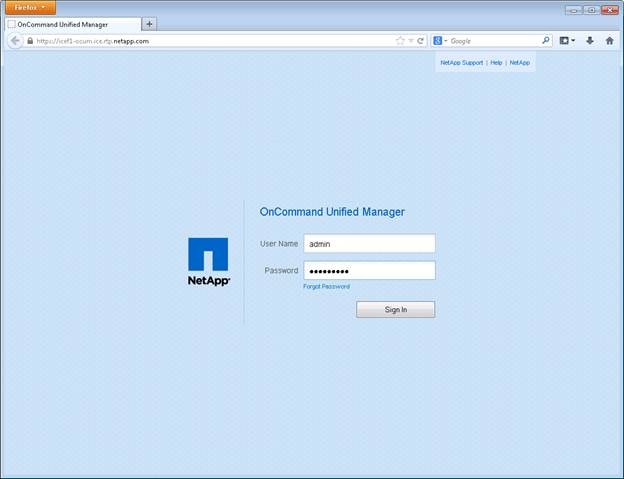

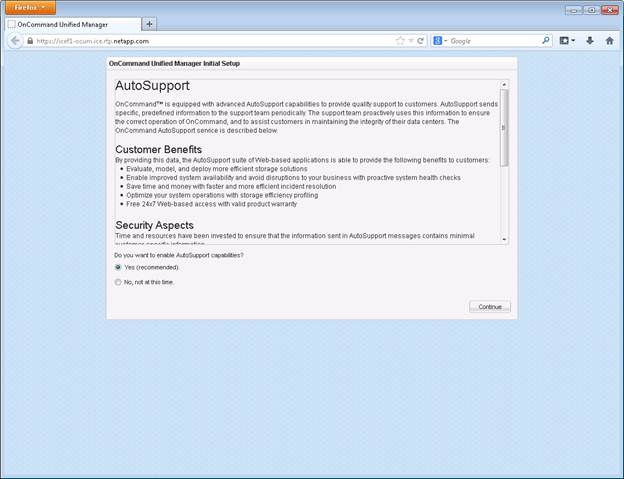

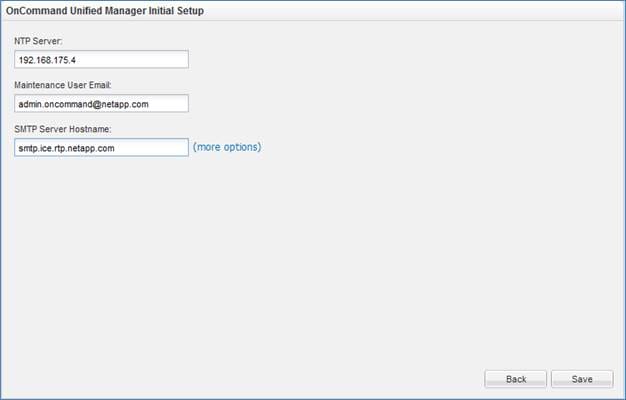

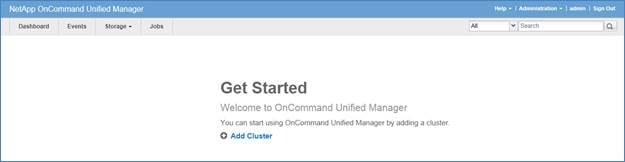

OnCommand Unified Manager Basic Setup

OnCommand Performance Manager 1.1

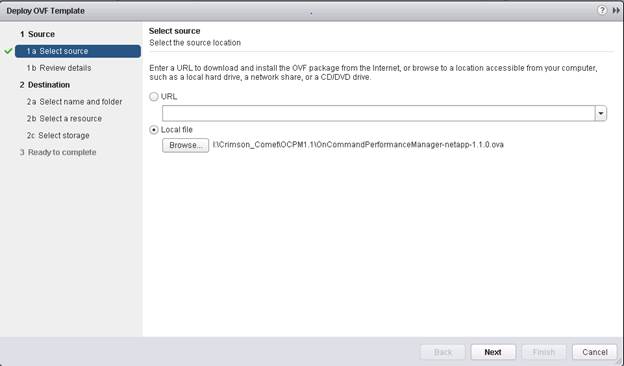

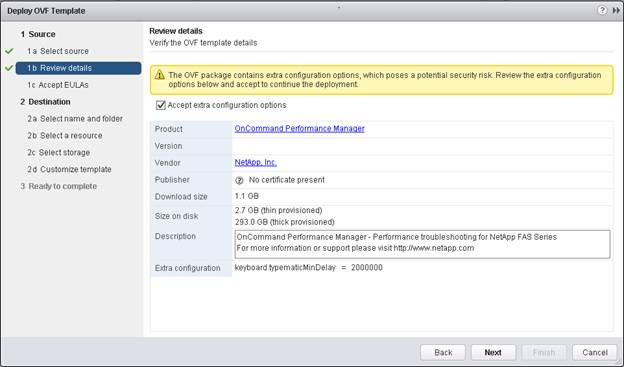

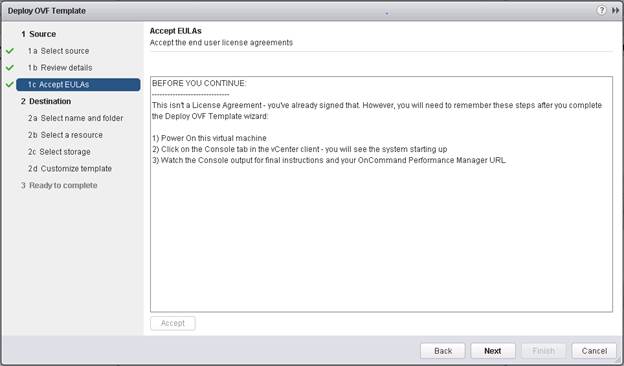

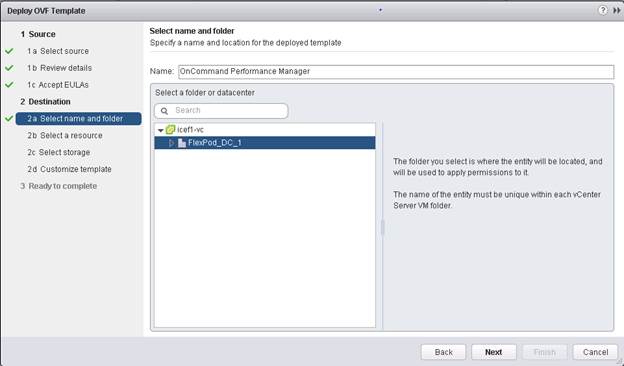

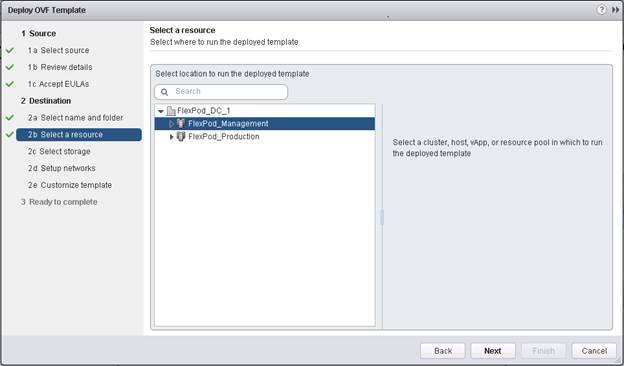

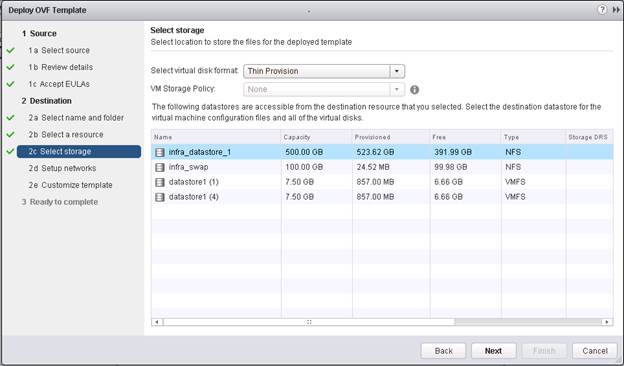

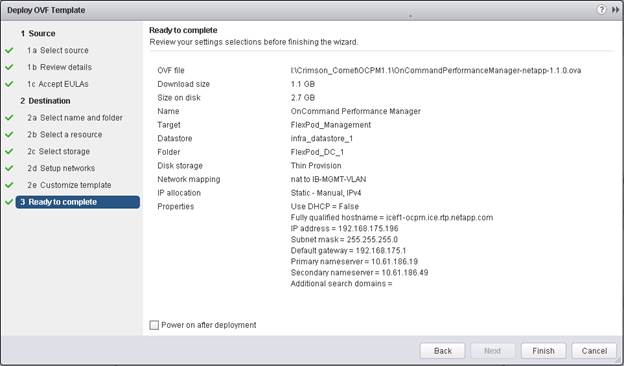

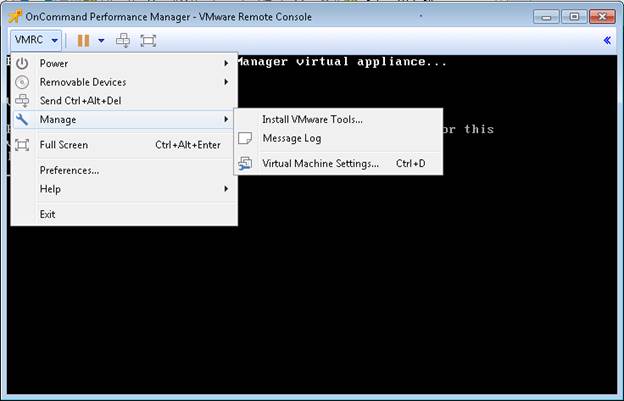

OnCommand Performance Manager OVF Deployment

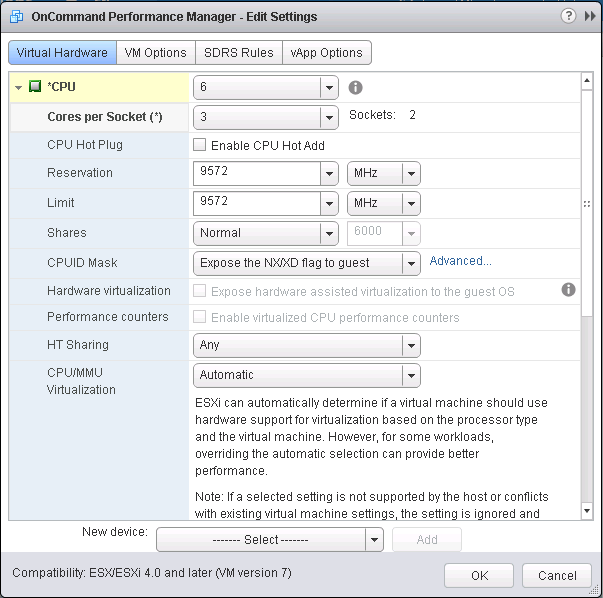

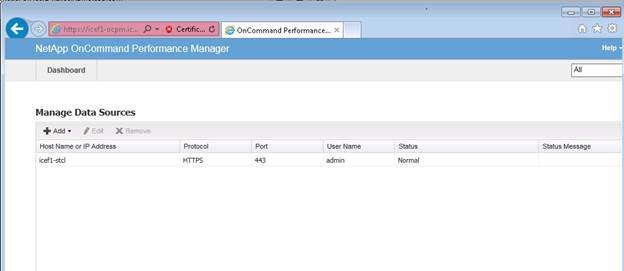

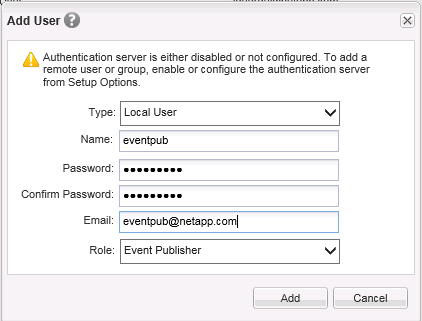

OnCommand Performance Manager Basic Setup

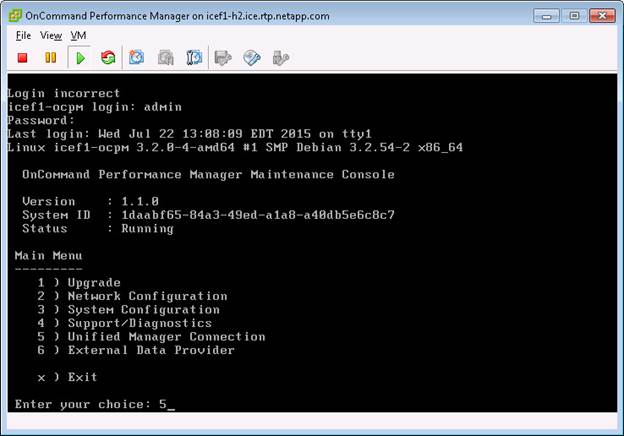

Link OnCommand Performance Manager to OnCommand Unified Manager

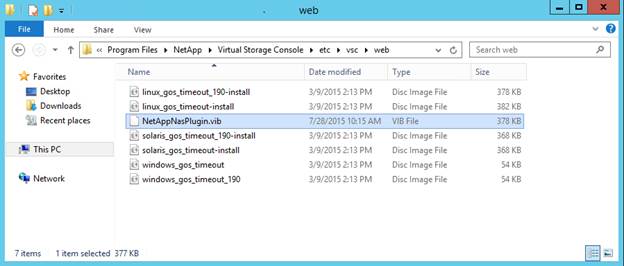

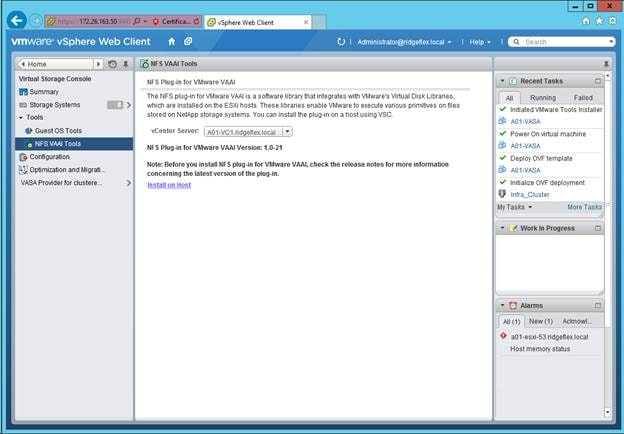

NetApp NFS Plug-In 1.0.21 for VMware VAAI

Enable VMware vStorage for NFS in Clustered Data ONTAP

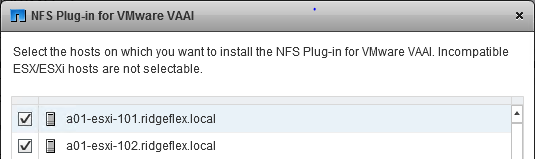

Install NetApp NFS Plug-In for VMware VAAI

Appendix A - Deploying Direct Connect FCoE

Add FCP as an Allowed Protocol in Infrastructure SVM

FCP Service in Clustered Data ONTAP

Create LUNs in Clustered Data ONTAP

FCoE LIF in Clustered Data ONTAP

Place Cisco UCS Fabric Interconnects in Fiber Channel Switching Mode

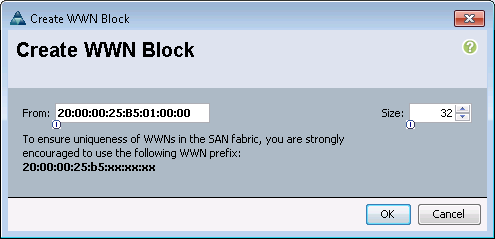

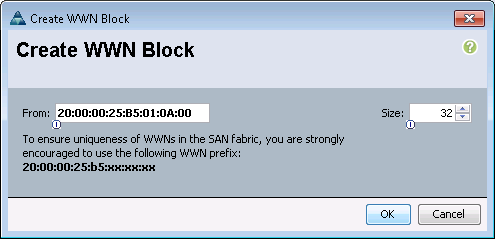

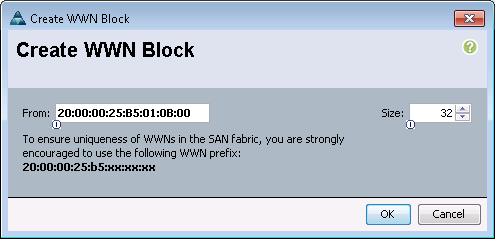

Create a WWNN Pool for FCoE Boot

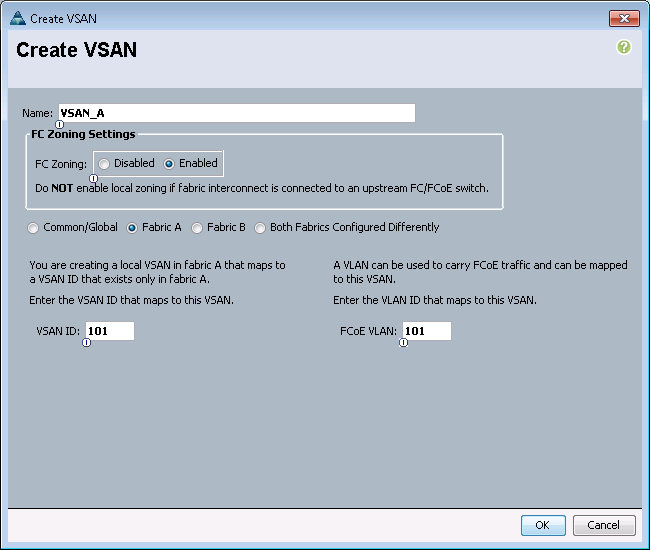

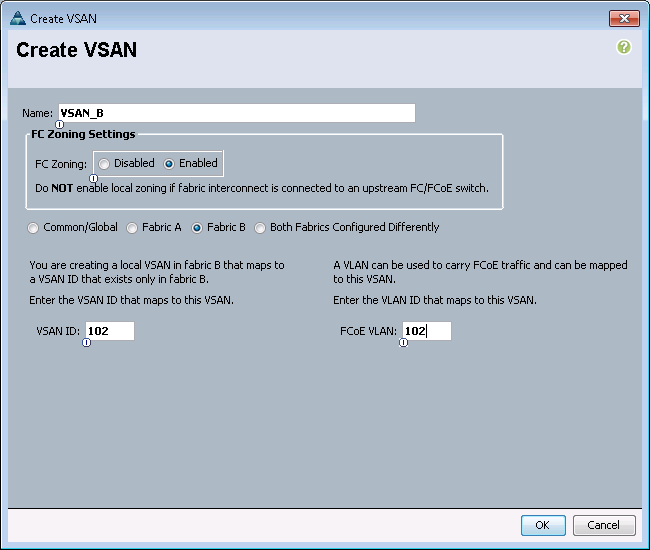

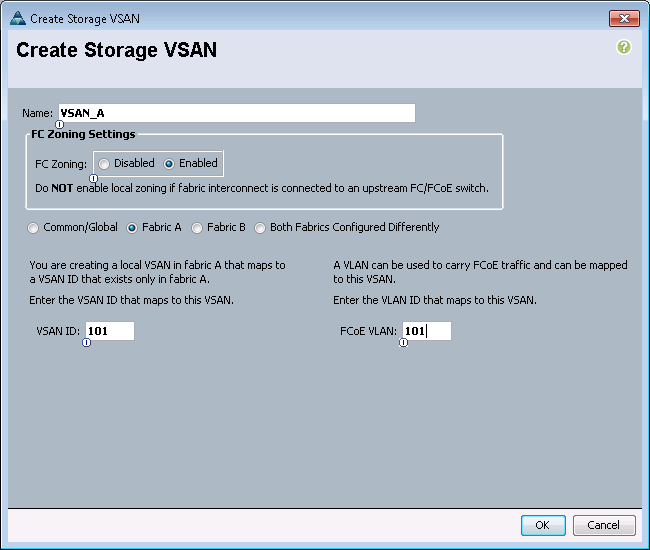

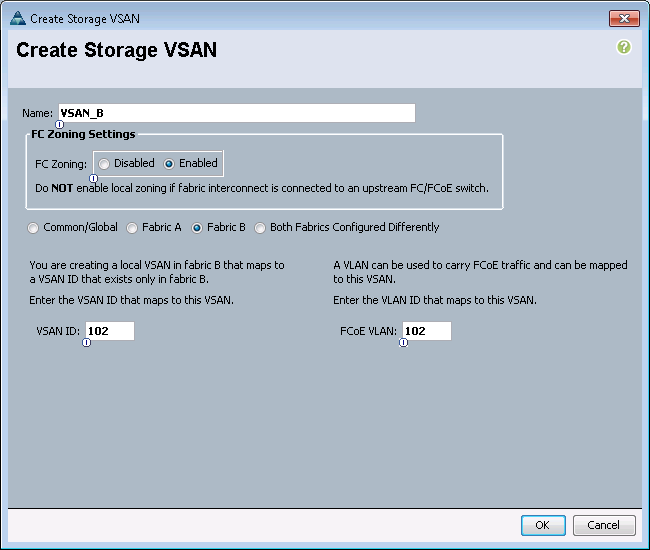

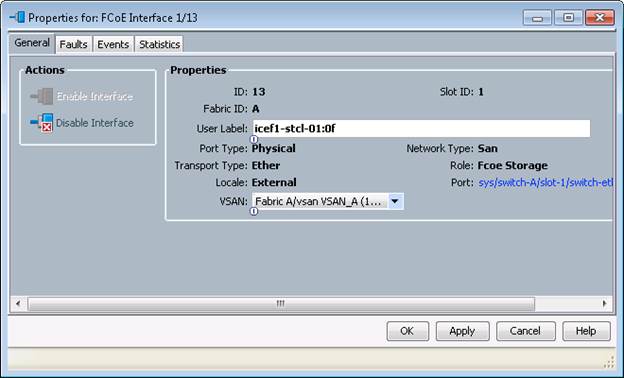

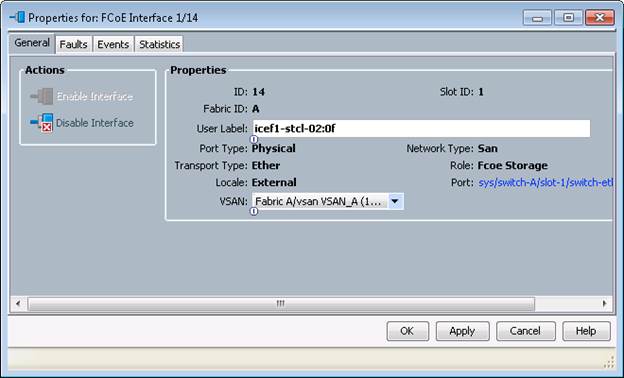

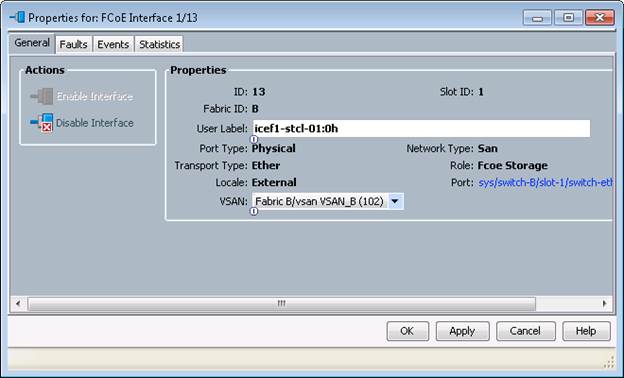

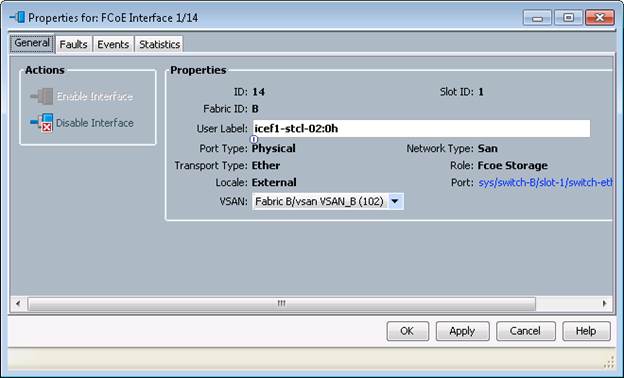

Assign VSANs to FCoE Storage Ports

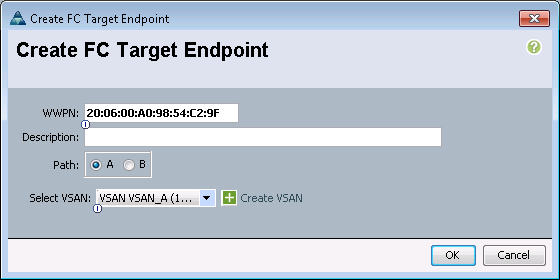

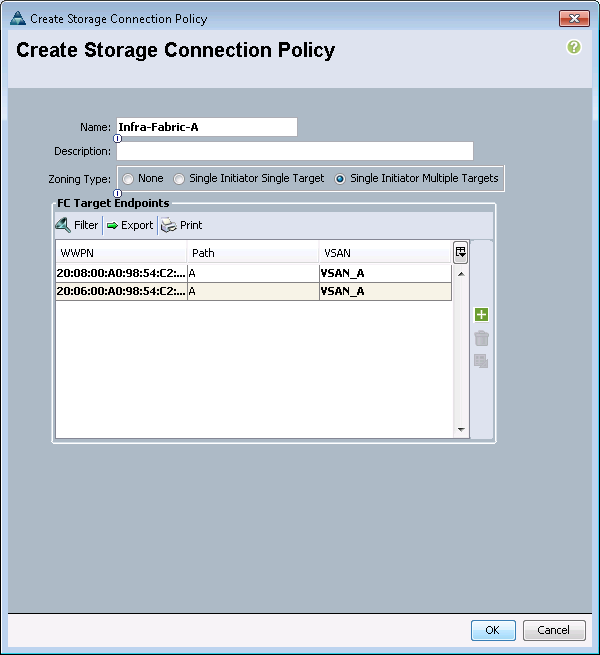

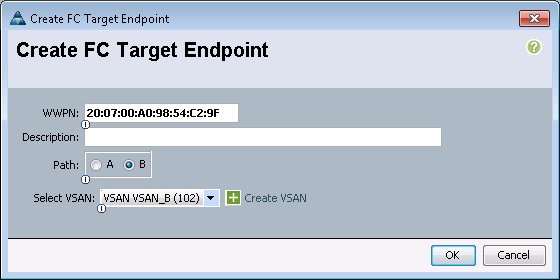

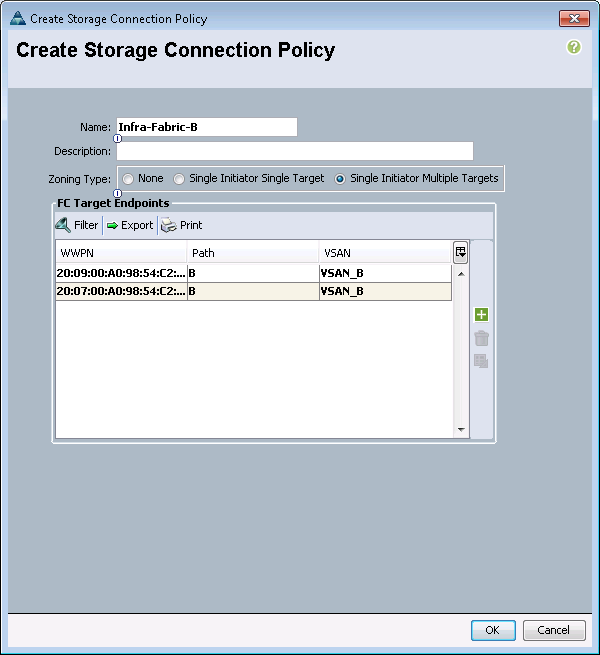

Create Storage Connection Policies for FCoE Zoning

Create Service Profile Template

Clustered Data ONTAP SAN Boot Storage Setup

Cisco® Validated Designs include systems and solutions that are designed, tested, and documented to facilitate and improve customer deployments. These designs incorporate a wide range of technologies and products into a portfolio of solutions that have been developed to address the business needs of customers. Cisco and NetApp have partnered to deliver FlexPod, which serves as the foundation for a variety of workloads and enables efficient architectural designs that are based on customer requirements. A FlexPod solution is a validated approach for deploying Cisco and NetApp technologies as a shared cloud infrastructure.

This document describes the Cisco and NetApp® FlexPod Datacenter with NetApp All Flash FAS (AFF), Cisco Application Centric Infrastructure (ACI), and VMware vSphere 5.5 Update 2. FlexPod Datacenter with NetApp AFF and Cisco ACI is a predesigned, best-practice data center architecture built on the Cisco Unified Computing System (UCS), the Cisco Nexus® 9000 family of switches, and NetApp AFF.

Introduction

Industry trends indicate a vast data center transformation toward shared infrastructure and cloud computing. Business agility requires application agility, so IT teams need to provision applications in hours instead of months. Resources need to scale up (or down) in minutes, not hours.

To simplify the evolution to a shared cloud infrastructure based on an application-driven policy model, Cisco and NetApp have developed a solution called FlexPod Datacenter with NetApp AFF and Cisco ACI. Cisco ACI provides a holistic architecture with centralized automation and policy-driven application profiles that delivers software flexibility with hardware performance. NetApp All Flash FAS addresses enterprise storage requirements with high performance, superior flexibility, and best-in-class data management.

Audience

The audience for this document includes, but is not limited to, sales engineers, field consultants, professional services, IT managers, partner engineers, and customers who want to take advantage of an infrastructure built to deliver IT efficiency and enable IT innovation.

Purpose of this Document

This document provides a step-by-step configuration and implementation guide for the FlexPod Datacenter with NetApp AFF and Cisco ACI solution. For the design decisions and technology discussion of the solution, refer to the FlexPod Datacenter with NetApp All Flash FAS, Cisco Nexus 9000 ACI, and VMware vSphere Design Guide.

What’s New?

The following design elements distinguish this version of FlexPod from previous non-ACI FlexPod models:

· Validation of Cisco ACI with a NetApp All Flash FAS storage array

· Support for the Cisco UCS 2.2 release and Cisco UCS B200-M4 servers

· Support for the latest release of NetApp Data ONTAP® 8.3

· An IP-based storage design supporting both NAS datastores and iSCSI-based SAN LUNs

· Support for direct-attached Fibre Channel storage access for boot LUNs

· Application design guidance for multi-tiered applications using Cisco ACI application profiles and policies

Architecture

FlexPod is a defined set of hardware and software that serves as an integrated foundation for both virtualized and non-virtualized solutions. VMware vSphere® built on FlexPod includes NetApp storage, NetApp Data ONTAP, NetApp All Flash FAS, Cisco Nexus® networking, the Cisco Unified Computing System (Cisco UCS®), and VMware vSphere software in a single package. The design is flexible enough that the networking, computing, and storage can fit in one data center rack or be deployed according to a customer's data center design. Port density enables the networking components to accommodate multiple configurations of this kind.

One benefit of the FlexPod architecture is the ability to customize or "flex" the environment to suit a customer's requirements. A FlexPod can easily be scaled as requirements and demand change. The unit can be scaled both up (adding resources to a FlexPod unit) and out (adding more FlexPod units). The reference architecture detailed in this document highlights the resiliency, cost benefit, and ease of deployment of an IP-based storage solution. A storage system capable of serving multiple protocols across a single interface allows for customer choice and investment protection because it truly is a wire-once architecture.

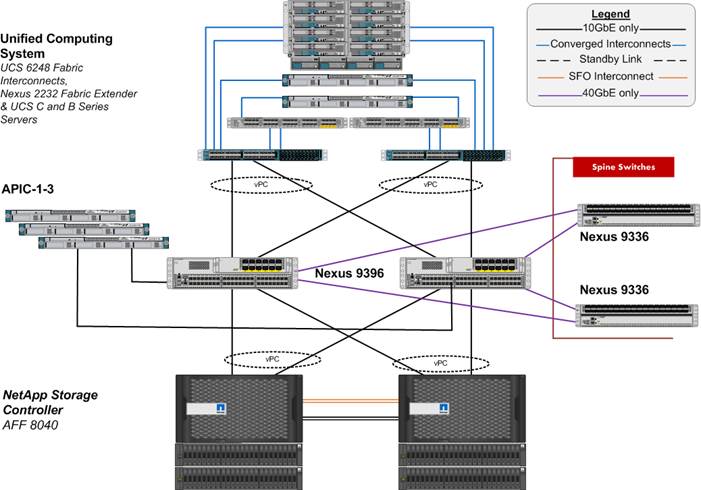

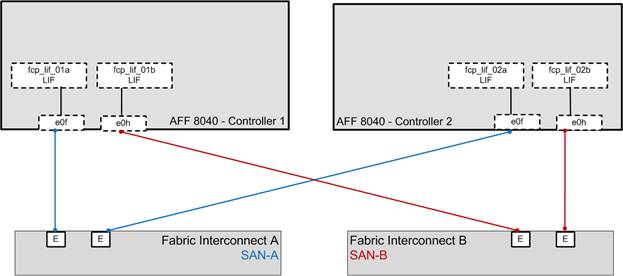

Figure 1 shows the VMware vSphere built on FlexPod components and the network connections for a configuration with IP-based storage. This design uses the Cisco Nexus 9000, Cisco Nexus 2232PP FEX, Cisco UCS C-Series and B-Series servers, and the NetApp AFF family of storage controllers, connected in a highly available modular design. This infrastructure is deployed to provide iSCSI-booted hosts with file-level and block-level access to shared storage. The reference architecture reinforces the "wire-once" strategy because, as additional storage is added to the architecture, no re-cabling is required from the hosts to the Cisco UCS fabric interconnect.

The ACI switching architecture is laid out in a leaf-and-spine topology in which every leaf connects to every spine using one or more 40G Ethernet interfaces. The software controller, APIC, is delivered as an appliance, and three or more such appliances form a cluster for high availability and enhanced performance.
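As a rough illustration of the full-mesh rule described above, the following Python sketch enumerates the leaf-to-spine links of a fabric. The switch names are hypothetical, and a single uplink per leaf-spine pair is an assumption; the counts (two leaves, two spines) simply mirror the hardware used in this validation.

```python
from itertools import product


def leaf_spine_links(leaves, spines, uplinks_per_pair=1):
    """Return every (leaf, spine, uplink_index) link in a full leaf-and-spine mesh."""
    return [
        (leaf, spine, idx)
        for leaf, spine in product(leaves, spines)
        for idx in range(uplinks_per_pair)
    ]


# Hypothetical device names, for illustration only.
leaves = ["leaf-A", "leaf-B"]
spines = ["spine-1", "spine-2"]

links = leaf_spine_links(leaves, spines)
for leaf, spine, idx in links:
    print(f"{leaf} <-> {spine} (40G uplink {idx + 1})")
print(f"Total 40G links: {len(links)}")
```

Because the link count grows as leaves × spines × uplinks per pair, a sketch like this can help estimate 40G port and cabling needs before adding a leaf or a spine.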

Physical Topology

Figure 1 illustrates the physical architecture.

Figure 1 FlexPod Design with Cisco ACI and NetApp Data ONTAP

The reference hardware configuration includes:

· Two Cisco Nexus 9396 switches

· Two Cisco Nexus 2232 fabric extenders

· Two Cisco UCS 6248UP fabric interconnects

· One NetApp AFF8040 (HA pair) running clustered Data ONTAP with disk shelves and solid-state drives (SSDs)

While not included in the FlexPod BOM, Cisco ACI spines and APIC controllers are an integral part of the Cisco ACI design. The following components were used in the validation efforts:

· Three APIC Controllers

· Two Cisco Nexus 9336-based spines

For server virtualization, the deployment includes VMware vSphere. Although this is the base design, each of the components can be scaled easily to support specific business requirements. For example, more (or different) servers or even blade chassis can be deployed to increase compute capacity, additional disk shelves can be deployed to improve I/O capability and throughput, and special hardware or software features can be added to introduce new features. This document guides you through the low-level steps for deploying the base architecture, as shown in Figure 1. These procedures cover everything from physical cabling to network, compute and storage device configurations.

Software Revisions

Table 1 lists the software revisions for this solution.

| Layer |

Device |

Image |

Comments |

| Compute |

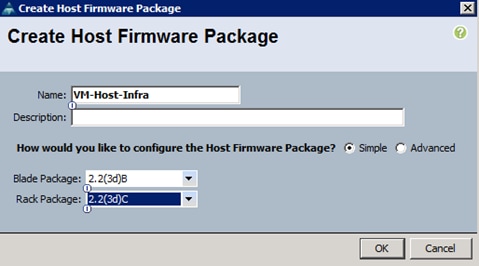

Cisco UCS Fabric Interconnects 6200 Series, UCS B-200 M4, UCS C-220 M4 |

2.2(3d) |

Includes the Cisco UCS-IOM 2208XP, Cisco UCS Manager, UCS VIC 1240 and UCS VIC 1340 |

|

|

Cisco eNIC |

2.1.2.62 |

|

|

|

Cisco fNIC |

1.6.0.12b |

|

| Network |

Cisco APIC |

1.0(4h)* |

|

|

|

Cisco Nexus 9000 iNX-OS |

11.0(4h)* |

|

| Storage |

NetApp AFF 8040 |

Data ONTAP 8.3 |

|

| Software |

VMware vSphere ESXi |

5.5u2 |

|

|

|

VMware vCenter |

5.5u2 |

|

|

|

OnCommand Unified Manager for clustered Data ONTAP |

6.2 |

|

|

|

NetApp Virtual Storage Console (VSC) |

6.0 |

|

|

|

OnCommand Performance Manager |

1.1 |

|

* Customers should always use the latest ACI software after consulting with their account team. The APIC screen captures in this Deployment Guide were captured in an earlier version and might be slightly different.

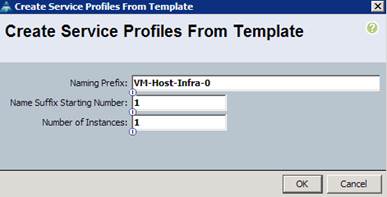

Configuration Guidelines

This document provides details for configuring a fully redundant, highly available FlexPod unit with clustered Data ONTAP storage. Each step therefore identifies the component being configured as 01 or 02, or as A or B. For example, node01 and node02 identify the two NetApp storage controllers that are provisioned with this document, and Cisco Nexus A and Cisco Nexus B identify the pair of Cisco Nexus switches that are configured. The Cisco UCS fabric interconnects are similarly configured. Additionally, this document details the steps for provisioning multiple Cisco UCS hosts, which are identified sequentially: VM-Host-Infra-01, VM-Host-Infra-02, and so on. Finally, to indicate that you should include information pertinent to your environment in a given step, <text> appears as part of the command structure. See the following example for the network port vlan create command:

Usage:

network port vlan create ?

[-node] <nodename> Node

{ [-vlan-name] {<netport>|<ifgrp>} VLAN Name

| -port {<netport>|<ifgrp>} Associated Network Port

[-vlan-id] <integer> } Network Switch VLAN Identifier

Example:

network port vlan create -node <node01> -vlan-name i0a-<vlan id>
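The placeholder convention can also be applied programmatically. The following Python sketch, which is not part of the validated procedure, fills `<text>` placeholders in a command template written in the style of the example above. The helper name `fill_placeholders` and the node name are hypothetical; VLAN 3170 is the NFS LIF VLAN ID used in validating this document.

```python
import re

# Matches a single-angle placeholder such as <node01> or <vlan id>.
PLACEHOLDER = re.compile(r"<([^<>]+)>")


def fill_placeholders(template, values):
    """Replace each <name> in template with values[name]; fail if a value is missing."""
    missing = [name for name in PLACEHOLDER.findall(template) if name not in values]
    if missing:
        raise KeyError(f"No value supplied for: {', '.join(missing)}")
    return PLACEHOLDER.sub(lambda m: str(values[m.group(1)]), template)


template = "network port vlan create -node <node01> -vlan-name i0a-<vlan id>"
# Example values are hypothetical; substitute the ones from your own environment.
values = {"node01": "flexpod-node01", "vlan id": 3170}
print(fill_placeholders(template, values))
```

Raising an error on a missing value, rather than leaving the placeholder in place, is a deliberate choice in this sketch: a command that still contains `<text>` should never reach a device.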

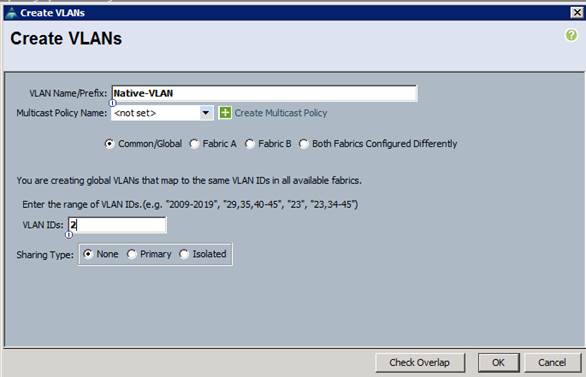

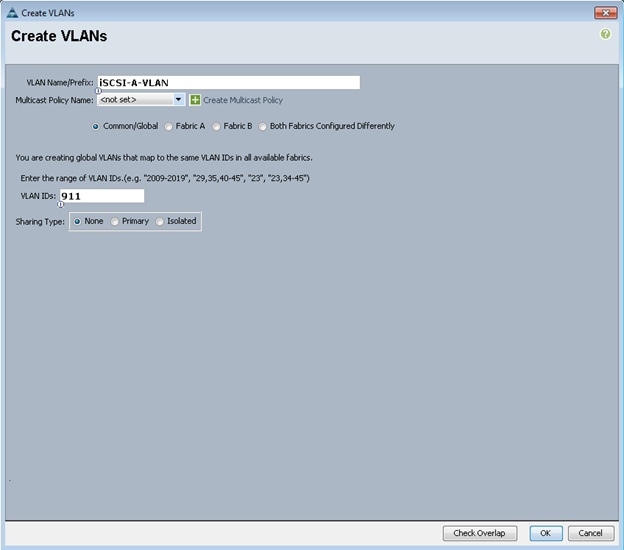

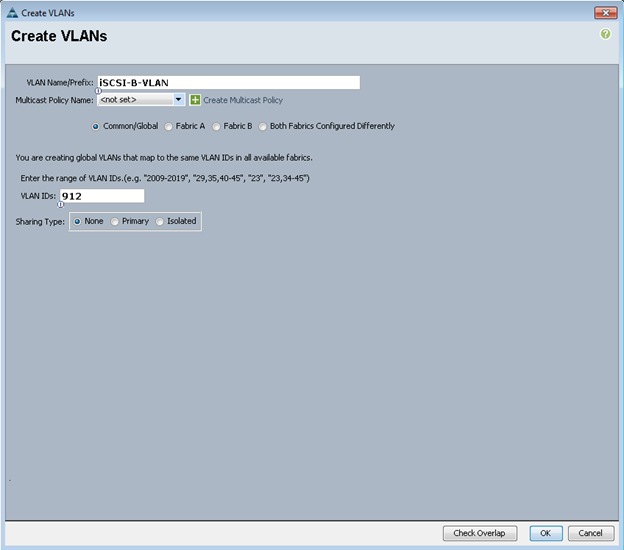

This document is intended to enable you to fully configure the customer environment. In this process, various steps require you to insert customer-specific naming conventions, IP addresses, and VLAN schemes, as well as to record appropriate MAC addresses. Table 2 describes the VLANs and Table 3 lists the virtual machines (VMs) necessary for deployment as outlined in this guide.

| VLAN Name |

VLAN Purpose |

ID Used in Validating This Document |

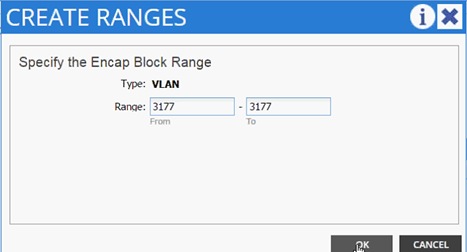

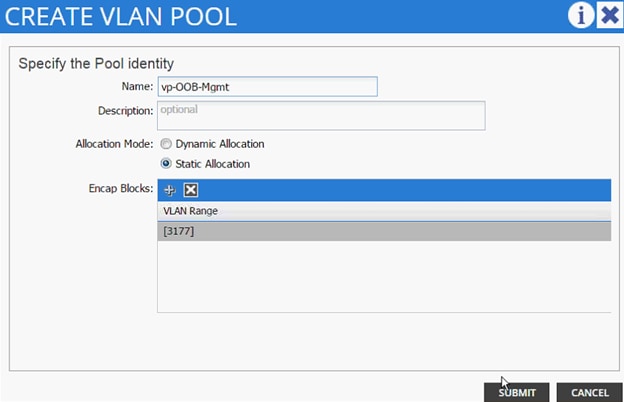

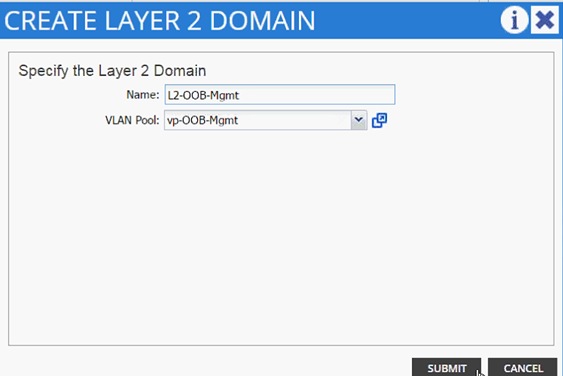

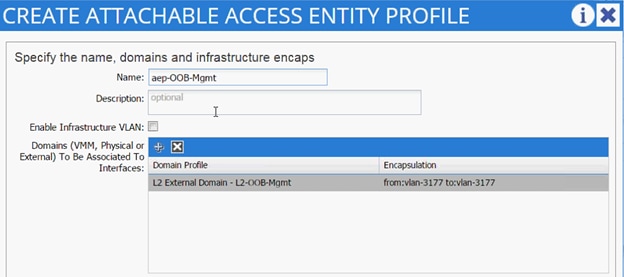

| Out of band Mgmt |

VLAN for out-of-band management interfaces |

3177 |

| Native |

VLAN to which untagged frames are assigned |

2 |

| NFS LIF |

VLAN for NFS LIF (NetApp) traffic |

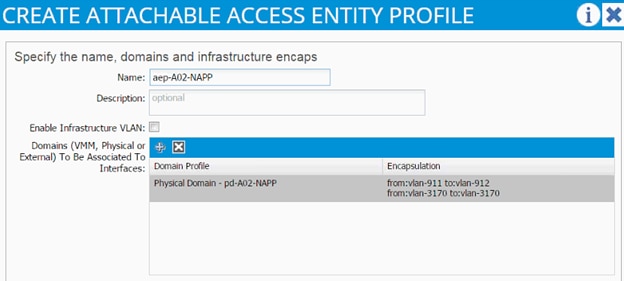

3170 |

| NFS VMK |

VLAN for NFS VMkernel (Infrastructure ESXi hosts) traffic |

3270 |

| iSCSI-A LIF |

VLAN for Fabric A iSCSI LIF |

901 |

| iSCSI-B LIF |

VLAN for Fabric B iSCSI LIF |

902 |

| iSCSI-A-VMK |

VLAN for iSCSI traffic for fabric A |

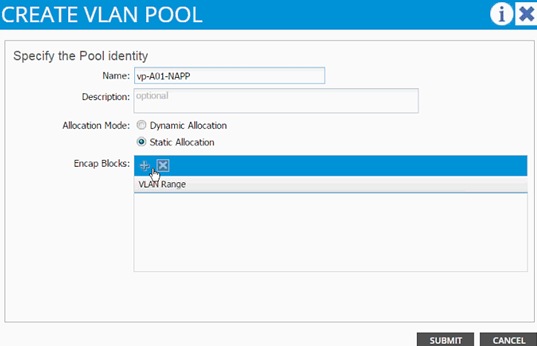

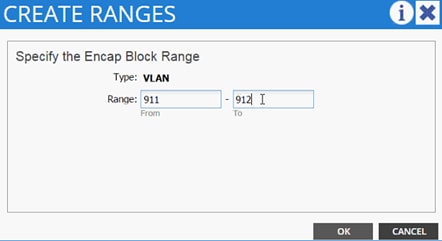

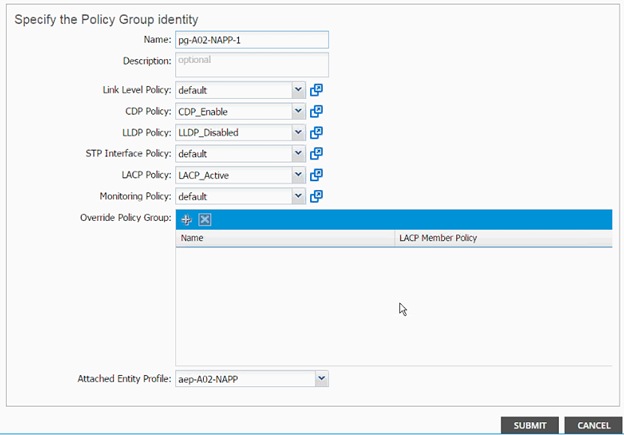

911 |

| iSCSI-B-VMK |

VLAN for iSCSI traffic for fabric B |

912 |

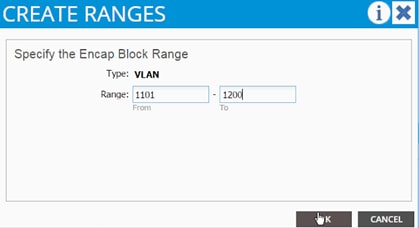

| Tenant Traffic |

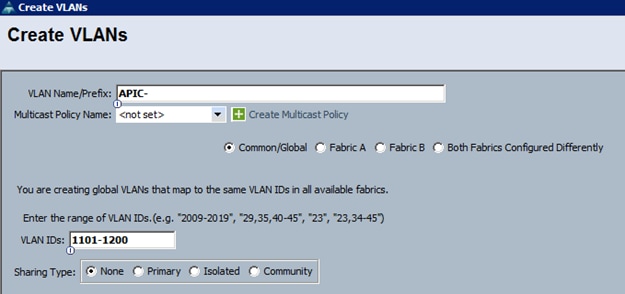

VLAN Range defined for ACI |

1101-1200 |
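When the VLAN IDs in Table 2 are replaced with site-specific values, a quick consistency check can catch collisions early. The Python sketch below uses the validation IDs from Table 2 as sample data. The checks themselves are illustrative assumptions rather than requirements stated in this guide: IDs within the 802.1Q range 1-4094, no ID reused, and no infrastructure VLAN inside the tenant range.

```python
# VLAN IDs taken from Table 2; the checks themselves are illustrative assumptions.
INFRA_VLANS = {
    "Out of band Mgmt": 3177,
    "Native": 2,
    "NFS LIF": 3170,
    "NFS VMK": 3270,
    "iSCSI-A LIF": 901,
    "iSCSI-B LIF": 902,
    "iSCSI-A-VMK": 911,
    "iSCSI-B-VMK": 912,
}
TENANT_RANGE = range(1101, 1201)  # 1101-1200 inclusive


def check_vlan_plan(vlans, tenant_range):
    """Return a list of human-readable problems found in the VLAN plan."""
    problems = []
    seen = {}
    for name, vlan_id in vlans.items():
        if not 1 <= vlan_id <= 4094:
            problems.append(f"{name}: VLAN {vlan_id} is outside 1-4094")
        if vlan_id in seen:
            problems.append(f"{name}: VLAN {vlan_id} already used by {seen[vlan_id]}")
        else:
            seen[vlan_id] = name
        if vlan_id in tenant_range:
            problems.append(f"{name}: VLAN {vlan_id} falls inside the tenant range")
    return problems


problems = check_vlan_plan(INFRA_VLANS, TENANT_RANGE)
print("\n".join(problems) if problems else "VLAN plan is consistent")
```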

Table 3 lists the virtual machines (VMs) necessary for deployment as outlined in this document.

| Virtual Machine Description | Host Name |
| --- | --- |
| Active Directory | |
| vCenter SQL Server database | |
| vCenter Server | |
| NetApp Virtual Storage Console (VSC) | |
| NetApp OnCommand Unified Manager | |
| OnCommand Performance Manager | |

Table 4 lists the configuration variables that are used throughout this document. This table can be completed based on the specific site variables and used in implementing the document configuration steps.
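Once the worksheet has been filled in, a basic sanity check can flag empty or malformed entries before they are pasted into device commands. The following Python sketch is one possible approach and is not part of the validated procedure. It assumes that variables whose names end in `_ip` or `_gateway` hold a single IP address and that names ending in `_mask` hold an IPv4 netmask; the sample values are hypothetical and use the 192.0.2.0/24 documentation range.

```python
import ipaddress


def check_worksheet(worksheet):
    """Return a dict mapping each problematic variable name to a description."""
    problems = {}
    for name, value in worksheet.items():
        if not value:
            problems[name] = "no value entered"
            continue
        try:
            if name.endswith(("_ip", "_gateway")):
                ipaddress.ip_address(value)
            elif name.endswith("_mask"):
                # IPv4Network rejects masks that are not contiguous.
                ipaddress.IPv4Network(f"0.0.0.0/{value}")
        except ValueError as err:
            problems[name] = str(err)
    return problems


# Hypothetical sample values, for illustration only.
worksheet = {
    "var_node01_mgmt_ip": "192.0.2.11",
    "var_node01_mgmt_mask": "255.255.255.0",
    "var_node01_mgmt_gateway": "192.0.2.1",
    "var_node02_mgmt_ip": "192.0.2.300",  # deliberately invalid
    "var_clustername": "",  # deliberately left blank
}
for name, problem in check_worksheet(worksheet).items():
    print(f"<<{name}>>: {problem}")
```

Variables that may hold more than one address, such as the DNS server entry, would need separate handling in a fuller version of this check.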

Table 4 Configuration Variables

| Variable |

Value |

| <<var_node01_mgmt_ip>> |

Out-of-band management IP for cluster node 01 |

| <<var_node01_mgmt_mask>> |

Out-of-band management network netmask |

| <<var_node01_mgmt_gateway>> |

Out-of-band management network default gateway |

| <<var_url_boot_software>> |

Data ONTAP 8.3 URL; format: http:// |

| <<var_node02_mgmt_ip>> |

Out-of-band management IP for cluster node 02 |

| <<var_node02_mgmt_mask>> |

Out-of-band management network netmask |

| <<var_node02_mgmt_gateway>> |

Out-of-band management network default gateway |

| <<var_clustername>> |

Storage cluster host name |

| <<var_cluster_base_license_key>> |

Cluster base license key |

| <<var_nfs_license>> |

NFS license key |

| <<var_iscsi_license>> |

iSCSI license key |

| <<var_password>> |

Global default administrative password |

| <<var_clustermgmt_ip>> |

In-band management IP for the storage cluster |

| <<var_clustermgmt_mask>> |

Out-of-band management network netmask |

| <<var_clustermgmt_gateway>> |

Out-of-band management network default gateway |

| <<var_dns_domain_name>> |

DNS domain name |

| <<var_nameserver_ip>> |

DNS server IP(s) |

| <<var_node_location>> |

Node location string for each node |

| <<var_node01_sp_ip>> |

Out-of-band cluster node 01 service processor management IP |

| <<var_node01_sp_mask>> |

Out-of-band management network netmask |

| <<var_node01_sp_gateway> |

Out-of-band management network default gateway |

| <<var_node02_sp_ip>> |

Out-of-band cluster node 02 device processor management IP |

| <<var_node02_sp_mask>> |

Out-of-band management network netmask |

| <<var_node02_sp_gateway> |

Out-of-band management network default gateway |

| <<var_node01>> |

Cluster node 01 hostname |

| <<var_node02>> |

Cluster node 02 hostname |

| <<var_num_disks>> |

Number of disks to assign to each storage controller |

| <<var_nfs_vlan_id>> |

Infrastructure NFS VLAN ID for LIF |

| <<var_nfs_vlan_tenant>> |

Tenant NFS VLAN ID for LIF (only required when deploying a tenant) |

| <<var_iscsi_vlan_A_id>> |

Infrastructure iSCSI-A VLAN ID for LIF |

| <<var_iscsi_vlan_B_id>> |

Infrastructure iSCSI-B VLAN ID for LIF |

| <<var_iscsi_vlan_A_tenant>> |

Tenant iSCSI-A VLAN ID for LIF (optional) |

| <<var_iscsi_vlan_B_tenant>> |

Tenant iSCSI-B VLAN ID for LIF (optional) |

| <<var_nfs_vlan_vmk>> |

Infrastructure NFS VLAN ID for VMkernel Port |

| <<var_iscsi_vlan_A_vmk>> |

Infrastructure iSCSI-A VLAN ID for VMkernel Port |

| <<var_iscsi_vlan_B_vmk>> |

Infrastructure iSCSI-B VLAN ID for VMkernel Port |

| <<var_ib_mgmt_vlan_id>> |

In-band management network VLAN ID |

| <<var_oob_mgmt_vlan_id>> |

Out-of-band management network VLAN ID |

| <<var_timezone>> |

FlexPod time zone (for example, America/New_York) |

| <<var_global_ntp_server_ip>> |

NTP server IP address |

| <<var_snmp_contact>> |

Administrator e-mail address |

| <<var_snmp_location>> |

Cluster location string |

| <<var_oncommand_server_fqdn>> |

VSC or OnCommand virtual machine fully qualified domain name (FQDN) |

| <<var_snmp_community>> |

Storage cluster SNMP v1/v2 community name |

| <<var_mailhost>> |

Mail server host name |

| <<var_storage_admin_email>> |

Administrator e-mail address |

| <<var_esxi_host1_nfs_ip>> |

NFS VLAN IP address for VMware ESXi host 1 |

| <<var_esxi_host2_nfs_ip>> |

NFS VLAN IP address for VMware ESXi host 2 |

| <<var_node01_nfs_lif_infra_swap_ip>> |

IP address of Infra Swap |

| <<var_node01_nfs_lif_infra_swap_mask>> |

Subnet Mask of Infra Swap |

| <<var_node02_nfs_lif_infra_datastore_1_ip>> |

IP address of Datastore 1 |

| <<var_node02_nfs_lif_infra_datastore_1_mask>> |

Subnet mask of Datastore 1 |

| <<var_vserver_mgmt_ip>> |

Management IP address for Vserver |

| <<var_vserver_mgmt_mask>> |

Subnet mask for Vserver |

| <<var_routing_group>> |

Routing group for Vserver |

| <<var_vserver_mgmt_gateway>> |

Default Gateway for Vserver |

| <<var_vsadmin_password>> |

Password for VS admin account |

| <<var_ucs_clustername>> |

Cisco UCS Manager cluster host name |

| <<var_ucsa_mgmt_ip>> |

Cisco UCS fabric interconnect (FI) A out-of-band management IP address |

| <<var_ucsa_mgmt_mask>> |

Out-of-band management network netmask |

| <<var_ucsa_mgmt_gateway>> |

Out-of-band management network default gateway |

| <<var_ucsb_mgmt_ip>> |

Cisco UCS FI B out-of-band management IP address |

| <<var_vm_host_infra_01_iqn>> |

IQN of Infra 01 |

| <<var_vm_host_infra_02_iqn>> |

IQN of Infra 02 |

| <<var_vm_host_infra_01_ip>> |

VMware ESXi host 01 out-of-band management IP |

| <<var_vm_host_infra_02_ip>> |

VMware ESXi host 02 out-of-band management IP |

| <<var_nfs_vlan_ip_host_01>> |

ESXi host 1, NFS VLAN IP |

| <<var_nfs_vlan_ip_mask_host_01>> |

ESXi host 1, NFS VLAN subnet mask |

| <<var_nfs_vlan_ip_host_02>> |

ESXi host 2, NFS VLAN IP |

| <<var_nfs_vlan_ip_mask_host_02>> |

ESXi host 2, NFS VLAN subnet mask |

| <<var_vcenter_server_ip>> |

IP address of the vCenter Server |

| <<var_svm_mgmt_vlan_id>> |

Infrastructure Vserver management VLAN ID |

| <<var_svm_mgmt_vlan_tenant>> |

Tenant Vserver management VLAN ID |

| <<var_nfs_subnet_address>> |

NFS subnet address |

| <<var_node02_nfs_lif_tenant_datastore_1_ip>> |

Tenant Datastore 1 IP address |

| <<var_node02_nfs_lif_tenant_datastore_1_mask>> |

Tenant Datastore 1 Subnet mask |

| <<var_node01_iscsi_lif01a_ip>> |

iSCSI LIF 01a IP address |

| <<var_node01_iscsi_lif01a_mask>> |

iSCSI LIF 01a subnet mask |

| <<var_node01_iscsi_lif01b_ip>> |

iSCSI LIF 01b IP address |

| <<var_node01_iscsi_lif01b_mask>> |

iSCSI LIF 01b subnet mask |

| <<var_node01_iscsi_lif02a_ip>> |

iSCSI LIF 02a IP address |

| <<var_node01_iscsi_lif02a_mask>> |

iSCSI LIF 02a subnet mask |

| <<var_node01_iscsi_lif02b_ip>> |

iSCSI LIF 02b IP address |

| <<var_node01_iscsi_lif02b_mask>> |

iSCSI LIF 02b subnet mask |

| <<var_node01_iscsi_tenant_lif01a_ip>> |

Tenant iSCSI LIF 01a IP address |

| <<var_node01_iscsi_tenant_lif01a_mask>> |

Tenant iSCSI LIF 01a subnet mask |

| <<var_node01_iscsi_tenant_lif01b_ip>> |

Tenant iSCSI LIF 01b IP address |

| <<var_node01_iscsi_tenant_lif01b_mask>> |

Tenant iSCSI LIF 01b subnet mask |

| <<var_node01_iscsi_tenant_lif02a_ip>> |

Tenant iSCSI LIF 02a IP address |

| <<var_node01_iscsi_tenant_lif02a_mask>> |

Tenant iSCSI LIF 02a subnet mask |

| <<var_node01_iscsi_tenant_lif02b_ip>> |

Tenant iSCSI LIF 02b IP address |

| <<var_node01_iscsi_tenant_lif02b_mask>> |

Tenant iSCSI LIF 02b subnet mask |

| <<var_vserver_mgmt_ip>> |

Management IP address for Infrastructure Vserver |

| <<var_vserver_mgmt_mask>> |

Management subnet mask for Infrastructure Vserver |

| <<var_vserver_tenant_mgmt_ip>> |

Management IP address for Tenant Vserver |

| <<var_vserver_tenant_mgmt_mask>> |

Management subnet mask for Tenant Vserver |

| <<var_vserver_mgmt_gateway>> |

Management Gateway for Infrastructure Vserver |

| <<var_vserver_tenant_mgmt_gateway>> |

Management Gateway for Tenant Vserver |

| <<var_oncommand_server_ip>> |

IP address of the OnCommand Unified Manager |

| <<var_rule_index>> |

Rule index number |

| <<var_vm_host_infra_01_A_wwpn>> |

WWPN of Infra Datastore 01 A |

| <<var_vm_host_infra_01_B_wwpn>> |

WWPN of Infra Datastore 01 B |

| <<var_vm_host_infra_02_A_wwpn>> |

WWPN of Infra Datastore 02 A |

| <<var_vm_host_infra_02_B_wwpn>> |

WWPN of Infra Datastore 02 B |

| <<var_server_nfs_vlan_id>> |

NFS VLAN ID |

| <<var_nfs_lif02_ip>> |

NFS LIF 02 IP Address |

| <<var_nfs_lif01_ip>> |

NFS LIF 01 IP Address |
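The `<<var_...>>` placeholders in the table above stand for site-specific values that are substituted into the commands later in this guide. The following is a minimal sketch of how that substitution might be scripted, assuming the values are kept in a Python dictionary. The function name `fill_placeholders` and the sample values are hypothetical and are not part of the validated procedure.

```python
import re

# Matches placeholders written as <<var_name>> in this guide.
PLACEHOLDER = re.compile(r"<<(var_[A-Za-z0-9_]+)>>")


def fill_placeholders(text, values):
    """Replace every <<var_...>> in text; raise KeyError if a value is missing."""
    missing = sorted({name for name in PLACEHOLDER.findall(text) if name not in values})
    if missing:
        raise KeyError("no value supplied for: " + ", ".join(missing))
    return PLACEHOLDER.sub(lambda m: str(values[m.group(1)]), text)


# Hypothetical site values; replace them with the entries from your own worksheet.
site_values = {
    "var_nfs_vlan_id": 3170,
    "var_timezone": "America/New_York",
}

print(fill_placeholders("NFS VLAN <<var_nfs_vlan_id>>, zone <<var_timezone>>", site_values))
```

Raising an error for an unfilled placeholder, rather than leaving it in the text, helps prevent a literal `<<var_...>>` string from being pasted into a console session.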

Physical Infrastructure

FlexPod Cabling

The information in this section is provided as a reference for cabling the physical equipment in a FlexPod environment. To simplify cabling requirements, the tables include both local and remote device and port locations.

The tables in this section contain the details for the prescribed and supported configuration of the NetApp AFF8040 running clustered Data ONTAP 8.3. For any modifications of this prescribed architecture, consult the NetApp Interoperability Matrix Tool (IMT).

This document assumes that out-of-band management ports are plugged into an existing management infrastructure at the deployment site. These interfaces are used in various configuration steps.

Be sure to follow the cabling directions in this section. The deployment procedures that follow reference specific port locations, so any deviation in cabling requires corresponding changes to those procedures.

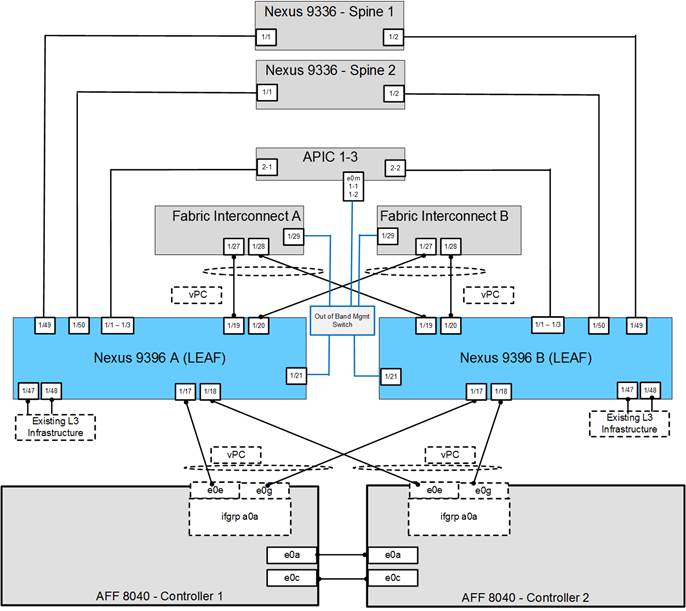

Figure 2 shows a cabling diagram for a FlexPod configuration using the Cisco Nexus 9000 and NetApp storage systems with clustered Data ONTAP. The NetApp storage controller and disk shelves should be connected according to best practices for the specific storage controller and disk shelves. For disk shelf cabling, refer to the Universal SAS and ACP Cabling Guide: https://library.netapp.com/ecm/ecm_get_file/ECMM1280392.

Figure 2 FlexPod Cabling Diagram

Table 5 through Table 14 provide the details of all the connections in use.

Table 5 Cisco Nexus 9396-A Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| Cisco Nexus 9396 A | Eth1/1 | 10GbE | APIC 1 | Eth 2-1 |
| Cisco Nexus 9396 A | Eth1/2 | 10GbE | APIC 2 | Eth 2-1 |
| Cisco Nexus 9396 A | Eth1/3 | 10GbE | APIC 3 | Eth 2-1 |
| Cisco Nexus 9396 A | Eth1/17 | 10GbE | NetApp controller 1 | e0e |
| Cisco Nexus 9396 A | Eth1/18 | 10GbE | NetApp controller 2 | e0e |
| Cisco Nexus 9396 A | Eth1/19 | 10GbE | Cisco UCS fabric interconnect A | Eth1/31 |
| Cisco Nexus 9396 A | Eth1/20 | 10GbE | Cisco UCS fabric interconnect B | Eth1/31 |
| Cisco Nexus 9396 A | Eth1/21 | GbE | Common Services Mgmt. Switch | Any |
| Cisco Nexus 9396 A | Eth1/49 | 40GbE | Cisco 9336 Spine 1 | Eth1/1 |
| Cisco Nexus 9396 A | Eth1/50 | 40GbE | Cisco 9336 Spine 2 | Eth1/1 |
| Cisco Nexus 9396 A | MGMT0 | GbE | GbE management switch | Any |

Note: For devices requiring GbE connectivity, use the GbE Copper SFP+s (GLC-T=).

Table 6 Cisco Nexus 9396-B Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| Cisco Nexus 9396 B | Eth1/1 | 10GbE | APIC 1 | Eth 2-2 |
| Cisco Nexus 9396 B | Eth1/2 | 10GbE | APIC 2 | Eth 2-2 |
| Cisco Nexus 9396 B | Eth1/3 | 10GbE | APIC 3 | Eth 2-2 |
| Cisco Nexus 9396 B | Eth1/17 | 10GbE | NetApp controller 1 | e0g |
| Cisco Nexus 9396 B | Eth1/18 | 10GbE | NetApp controller 2 | e0g |
| Cisco Nexus 9396 B | Eth1/19 | 10GbE | Cisco UCS fabric interconnect A | Eth1/32 |
| Cisco Nexus 9396 B | Eth1/20 | 10GbE | Cisco UCS fabric interconnect B | Eth1/32 |
| Cisco Nexus 9396 B | Eth1/21 | GbE | Common Services Mgmt. Switch | Any |
| Cisco Nexus 9396 B | Eth1/49 | 40GbE | Cisco 9336 Spine 1 | Eth1/2 |
| Cisco Nexus 9396 B | Eth1/50 | 40GbE | Cisco 9336 Spine 2 | Eth1/2 |
| Cisco Nexus 9396 B | MGMT0 | GbE | GbE management switch | Any |

Table 7 NetApp Controller-1 Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| NetApp controller 1 | e0M | 100MbE | 100MbE management switch | Any |
| NetApp controller 1 | e0i | GbE | GbE management switch | Any |
| NetApp controller 1 | e0P | GbE | SAS shelves | ACP port |
| NetApp controller 1 | e0a | 10GbE | NetApp controller 2 | e0a |
| NetApp controller 1 | e0c | 10GbE | NetApp controller 2 | e0c |
| NetApp controller 1 | e0e | 10GbE | Cisco Nexus 9000 A | Eth 1/17 |
| NetApp controller 1 | e0g | 10GbE | Cisco Nexus 9000 B | Eth 1/17 |

Note: When the term e0M is used, the physical Ethernet port to which the table is referring is the port indicated by a wrench icon on the rear of the chassis.

Table 8 NetApp controller 2 Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| NetApp controller 2 | e0M | 100MbE | 100MbE management switch | Any |
| NetApp controller 2 | e0i | GbE | GbE management switch | Any |
| NetApp controller 2 | e0P | GbE | SAS shelves | ACP port |
| NetApp controller 2 | e0a | 10GbE | NetApp controller 1 | e0a |
| NetApp controller 2 | e0c | 10GbE | NetApp controller 1 | e0c |
| NetApp controller 2 | e0e | 10GbE | Cisco Nexus 9000 A | Eth 1/18 |
| NetApp controller 2 | e0g | 10GbE | Cisco Nexus 9000 B | Eth 1/18 |
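Because each storage uplink is listed from both ends (once in the Cisco Nexus tables and once in the NetApp controller tables), the entries can be cross-checked before cabling begins. The following is a minimal sketch of such a check, using the storage-facing links from Table 5 through Table 8. It assumes that "Cisco Nexus 9396 A" and "Cisco Nexus 9000 A" name the same switch (likewise for B); the helper names are hypothetical.

```python
import re

# Storage-facing links from Table 5 through Table 8, each as
# (local device, local port, remote device, remote port).
LINKS = [
    ("Cisco Nexus 9396 A", "Eth1/17", "NetApp controller 1", "e0e"),
    ("Cisco Nexus 9396 A", "Eth1/18", "NetApp controller 2", "e0e"),
    ("Cisco Nexus 9396 B", "Eth1/17", "NetApp controller 1", "e0g"),
    ("Cisco Nexus 9396 B", "Eth1/18", "NetApp controller 2", "e0g"),
    ("NetApp controller 1", "e0e", "Cisco Nexus 9000 A", "Eth 1/17"),
    ("NetApp controller 1", "e0g", "Cisco Nexus 9000 B", "Eth 1/17"),
    ("NetApp controller 2", "e0e", "Cisco Nexus 9000 A", "Eth 1/18"),
    ("NetApp controller 2", "e0g", "Cisco Nexus 9000 B", "Eth 1/18"),
]


def normalize_device(name):
    """Map the naming variants used in the tables onto one key (assumed equivalent)."""
    name = re.sub(r"nexus (9396|9000)", "nexus", name.lower())
    return " ".join(name.split())


def normalize_port(port):
    """Treat 'Eth 1/17' and 'Eth1/17' as the same port."""
    return port.replace(" ", "").lower()


def endpoint(device, port):
    return (normalize_device(device), normalize_port(port))


def unmatched_links(links):
    """Return every link whose reverse direction is not also listed."""
    seen = {(endpoint(ld, lp), endpoint(rd, rp)) for ld, lp, rd, rp in links}
    return sorted(pair for pair in seen if (pair[1], pair[0]) not in seen)


print(unmatched_links(LINKS))
```

An empty list means that every listed link has a matching entry in the remote device's table. Any link that appears from only one end is returned for review.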

Table 9 UCS Fabric Interconnect A Cabling Information

| Local Device |

Local Port |

Connection |

Remote Device |

Remote Port |

| Cisco UCS fabric interconnect A

|

Eth1/1 |

10GbE |

Cisco UCS Chassis FEX A |

IOM 1/1 |

| Eth1/2 |

10GbE |

Cisco UCS Chassis FEX A |

IOM 1/2 |

|

| Eth1/27 |

10GbE |

Cisco Nexus 9000 A |

Eth 1/19 |

|

| Eth1/28 |

10GbE |

Cisco Nexus 9000 B |

Eth 1/19 |

|

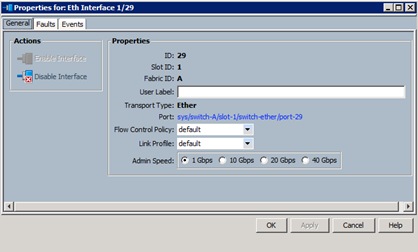

| Eth1/29 |

10GbE |

Management Switch |

Any |

|

| Eth1/31 |

10GbE |

Cisco Nexus 2232PP FEX A |

Uplink 1 |

|

| Eth1/32 |

10GbE |

Cisco Nexus 2232PP FEX A |

Uplink 2 |

|

| MGMT0 |

GbE |

GbE management switch |

Any |

|

| L1 |

GbE |

Cisco UCS fabric interconnect B |

L1 |

|

|

|

L2 |

GbE |

Cisco UCS fabric interconnect B |

L2 |

Table 10 UCS Fabric Interconnect B Cabling Information

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| Cisco UCS fabric interconnect B | Eth1/1 | 10GbE | Cisco UCS Chassis FEX B | IOM 1/1 |
| Cisco UCS fabric interconnect B | Eth1/2 | 10GbE | Cisco UCS Chassis FEX B | IOM 1/2 |
| Cisco UCS fabric interconnect B | Eth1/27 | 10GbE | Cisco Nexus 9000 A | Eth 1/20 |
| Cisco UCS fabric interconnect B | Eth1/28 | 10GbE | Cisco Nexus 9000 B | Eth 1/20 |
| Cisco UCS fabric interconnect B | Eth1/29 | 10GbE | Management Switch | Any |
| Cisco UCS fabric interconnect B | Eth1/31 | 10GbE | Cisco Nexus 2232PP FEX B | Uplink 1 |
| Cisco UCS fabric interconnect B | Eth1/32 | 10GbE | Cisco Nexus 2232PP FEX B | Uplink 2 |
| Cisco UCS fabric interconnect B | MGMT0 | GbE | GbE management switch | Any |
| Cisco UCS fabric interconnect B | L1 | GbE | Cisco UCS fabric interconnect A | L1 |
| Cisco UCS fabric interconnect B | L2 | GbE | Cisco UCS fabric interconnect A | L2 |

Table 11 Cisco Nexus 2232 FEX A—Single Wire Management

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| Cisco Nexus 2232PP FEX A | Port 1 | 10GbE | Cisco UCS C-Series 1 | Port 0 |
| Cisco Nexus 2232PP FEX A | Port 2 | 10GbE | Cisco UCS C-Series 2 | Port 0 |

Table 12 Cisco Nexus 2232 FEX B—Single Wire Management

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| Cisco Nexus 2232PP FEX B | Port 1 | 10GbE | Cisco UCS C-Series 1 | Port 1 |
| Cisco Nexus 2232PP FEX B | Port 2 | 10GbE | Cisco UCS C-Series 2 | Port 1 |

Table 13 Cisco UCS C-Series 1

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| Cisco UCS C-Series 1 | Port 0 | 10GbE | Cisco Nexus 2232PP FEX A | Port 1 |
| Cisco UCS C-Series 1 | Port 1 | 10GbE | Cisco Nexus 2232PP FEX B | Port 1 |

Table 14 Cisco UCS C-Series 2

| Local Device | Local Port | Connection | Remote Device | Remote Port |
|---|---|---|---|---|
| Cisco UCS C-Series 2 | Port 0 | 10GbE | Cisco Nexus 2232PP FEX A | Port 2 |
| Cisco UCS C-Series 2 | Port 1 | 10GbE | Cisco Nexus 2232PP FEX B | Port 2 |

Controller AFF80XX Series

Refer to the Site Requirements Guide for planning the physical location of the storage systems. From the downloaded guide, refer to the following sections:

· Site Preparation

· System Connectivity Requirements

· Circuit Breaker, Power Outlet Balancing, System Cabinet Power Cord Plugs, and Console Pinout Requirements

· 80xx Series Systems

NetApp Hardware Universe

The NetApp Hardware Universe provides supported hardware and software components for the specific Data ONTAP version. It provides configuration information for all the NetApp storage appliances currently supported by the Data ONTAP software. It also provides a table of component compatibilities.

1. Confirm that the hardware and software components are supported with the version of Data ONTAP that you plan to install by using the NetApp Hardware Universe (HWU) application at the NetApp Support site.

2. Access the HWU application to view the System Configuration guides. Click the Controllers tab to view the compatibility between Data ONTAP software versions and NetApp storage appliances with the desired specifications.

3. Alternatively, to compare components by storage appliance, click Compare Storage Systems.

Controllers

Follow the physical installation procedures for the controllers, which can be found in the AFF8000 Series product documentation at the NetApp Support site.

Disk Shelves

NetApp storage systems support a wide variety of disk shelves and disk drives. The complete list of disk shelves that are supported with AFF 80xx is available at the NetApp Support site.

When using SAS disk shelves with NetApp storage controllers, refer to the SAS Disk Shelves Universal SAS and ACP Cabling Guide for proper cabling guidelines.

Clustered Data ONTAP 8.3

Complete the Configuration Worksheet

Before running the setup script, complete the cluster setup worksheet from the Clustered Data ONTAP 8.3 Software Setup Guide.

| How to Access the Configuration Worksheet | Comments |
|---|---|
| Configuration Worksheet | Requires access to the NetApp Support site. |

Configure Clustered Data ONTAP Nodes

Before running the setup script, review the configuration worksheets in the Clustered Data ONTAP 8.3 Software Setup Guide to learn about the information required to configure clustered Data ONTAP. Table 15 lists the information that you will need to configure two clustered Data ONTAP nodes. You should customize the cluster detail values with the information that is applicable to your deployment.

Table 15 Clustered Data ONTAP Software Installation Prerequisites

| Cluster Detail | Cluster Detail Value |
|---|---|
| Cluster Node01 IP address | <<var_node01_mgmt_ip>> |
| Cluster Node01 netmask | <<var_node01_mgmt_mask>> |
| Cluster Node01 gateway | <<var_node01_mgmt_gateway>> |
| Cluster Node02 IP address | <<var_node02_mgmt_ip>> |
| Cluster Node02 netmask | <<var_node02_mgmt_mask>> |
| Cluster Node02 gateway | <<var_node02_mgmt_gateway>> |
| Data ONTAP 8.3 URL | <<var_url_boot_software>> |
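The address, netmask, and gateway values in Table 15 are typed by hand at the console, so it can help to check them for consistency beforehand. The following is a minimal sketch using Python's standard `ipaddress` module. The function name `check_mgmt_settings` and the sample addresses are hypothetical stand-ins for the `<<var_node01_mgmt_...>>` values.

```python
import ipaddress


def check_mgmt_settings(ip, netmask, gateway):
    """Return the management network if ip, netmask, and gateway are consistent."""
    # ip_interface accepts dotted-decimal netmasks and rejects malformed ones.
    iface = ipaddress.ip_interface(f"{ip}/{netmask}")
    gw = ipaddress.ip_address(gateway)
    if gw not in iface.network:
        raise ValueError(f"gateway {gw} is outside {iface.network}")
    if gw == iface.ip:
        raise ValueError("gateway and node address are identical")
    return iface.network


# Hypothetical values; substitute the entries from your completed worksheet.
print(check_mgmt_settings("192.168.10.21", "255.255.255.0", "192.168.10.1"))
```

A malformed netmask or a gateway outside the node's subnet raises `ValueError`, which is easier to correct at this stage than after the node has rebooted with the wrong settings.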

Configure Node 01

To configure node 01, complete the following steps:

1. Connect to the storage system console port. You should see a Loader-A prompt. However, if the storage system is in a reboot loop, press Ctrl-C to exit the autoboot loop when you see this message:

Starting AUTOBOOT press Ctrl-C to abort

2. Allow the system to boot up.

autoboot

3. Press Ctrl-C when prompted.

Note: If Data ONTAP 8.3 is not the version of software being booted, continue the following steps to install new software. If Data ONTAP 8.3 is the version being booted, select option 8 and y (Yes) to reboot the node, then continue with step 14.

4. To install new software, select option 7.

7

5. Enter y (Yes) to perform an upgrade.

y

6. Select e0M for the network port you want to use for the download.

e0M

7. Enter y (Yes) to reboot now.

y

8. After reboot, enter the IP address, netmask, and default gateway for e0M in their respective places.

<<var_node01_mgmt_ip>> <<var_node01_mgmt_mask>> <<var_node01_mgmt_gateway>>

9. Enter the URL where the software can be found.

Note: This web server must be pingable.

<<var_url_boot_software>>

10. Press Enter for the user name, indicating no user name.

Enter

11. Enter y (Yes) to set the newly installed software as the default to be used for subsequent reboots.

y

12. Enter y (Yes) to reboot the node.

y

Note: When installing new software, the system might perform firmware upgrades to the BIOS and adapter cards, causing reboots and possible stops at the Loader-A prompt. If these actions occur, the system might deviate from this procedure.

13. Press Ctrl-C when you see this message:

Press Ctrl-C for Boot Menu

14. Select option 4 for Clean Configuration and Initialize All Disks.

4

15. Enter y (Yes) to zero disks, reset config, and install a new file system.

y

16. Enter y (Yes) to erase all the data on the disks.

y

Note: The initialization and creation of the root volume can take 90 minutes or more to complete, depending on the number of disks attached. After initialization is complete, the storage system reboots. You can continue with the node 02 configuration while the disks for node 01 are zeroing.

Configure Node 02

To configure node 02, complete the following steps:

1. Connect to the storage system console port. You should see a Loader-A prompt. However, if the storage system is in a reboot loop, press Ctrl-C to exit the autoboot loop when you see this message:

Starting AUTOBOOT press Ctrl-C to abort…

2. Allow the system to boot up.

autoboot

3. Press Ctrl-C when prompted.

Ctrl-C

Note: If Data ONTAP 8.3 is not the version of software being booted, continue with the following steps to install new software. If Data ONTAP 8.3 is the version being booted, select option 8 and y (Yes) to reboot the node, then continue with step 14.

4. To install new software, select option 7.

7

5. Enter y (Yes) to perform a non-disruptive upgrade.

y

6. Select e0M for the network port you want to use for the download.

e0M

7. Enter y (Yes) to reboot now.

y

8. Enter the IP address, netmask, and default gateway for e0M in their respective places.

<<var_node02_mgmt_ip>> <<var_node02_mgmt_mask>> <<var_node02_mgmt_gateway>>

9. Enter the URL where the software can be found.

Note: This web server must be pingable.

<<var_url_boot_software>>

10. Press Enter for the user name, indicating no user name.

Enter

11. Enter y (Yes) to set the newly installed software as the default to be used for subsequent reboots.

y

12. Enter y (Yes) to reboot the node.

y

Note: When installing new software, the system might perform firmware upgrades to the BIOS and adapter cards, causing reboots and possible stops at the Loader-A prompt. If these actions occur, the system might deviate from this procedure.

13. Press Ctrl-C when you see this message:

Press Ctrl-C for Boot Menu

14. Select option 4 for Clean Configuration and Initialize All Disks.

4

15. Enter y (Yes) to zero disks, reset config, and install a new file system.

y

16. Enter y (Yes) to erase all the data on the disks.

y

Note: The initialization and creation of the root volume can take 90 minutes or more to complete, depending on the number of disks attached. When initialization is complete, the storage system reboots.
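Step 9 in both node procedures requires that the web server hosting the Data ONTAP image be reachable. The following is a minimal sketch of a pre-check that could be run from an administration workstation on the management network. It attempts a TCP connection to the server's port as a stand-in for ping (it does not send ICMP), and it assumes the image is served over HTTP or HTTPS. The function names and the sample URL are hypothetical.

```python
import socket
from urllib.parse import urlsplit


def host_and_port(url):
    """Extract the server host and TCP port from an HTTP(S) URL."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        raise ValueError(f"not an HTTP(S) URL: {url!r}")
    port = parts.port or (443 if parts.scheme == "https" else 80)
    return parts.hostname, port


def web_server_reachable(url, timeout=3.0):
    """Try a TCP connection to the server; a stand-in for ping, not ICMP."""
    host, port = host_and_port(url)
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


# Hypothetical URL standing in for <<var_url_boot_software>>.
print(host_and_port("http://192.168.10.5/ontap/image.tgz"))
```

A successful check from the workstation does not guarantee that the controller's e0M port can reach the server, so treat the result as an early warning only.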

Set Up Node

From a console port program attached to the storage controller A (node 01) console port, run the node setup script. This script appears when Data ONTAP 8.3 boots on the node for the first time. To set up the node, complete the following steps:

1. Follow the prompts to set up node 01.

Welcome to node setup.

You can enter the following commands at any time:

"help" or "?" - if you want to have a question clarified,

"back" - if you want to change previously answered questions, and

"exit" or "quit" - if you want to quit the setup wizard.

Any changes you made before quitting will be saved.

To accept a default or omit a question, do not enter a value.

This system will send event messages and weekly reports to NetApp Technical

Support.

To disable this feature, enter "autosupport modify -support disable" within 24

hours.

Enabling AutoSupport can significantly speed problem determination and

resolution should a problem occur on your system.

For further information on AutoSupport, see:

http://support.netapp.com/autosupport/

Type yes to confirm and continue {yes}: yes

Enter the node management interface port [e0M]: Enter

Enter the node management interface IP address: <<var_node01_mgmt_ip>>

Enter the node management interface netmask: <<var_node01_mgmt_mask>>

Enter the node management interface default gateway: <<var_node01_mgmt_gateway>>

A node management interface on port e0M with IP address <<var_node01_mgmt_ip>> has been created

This node has its management address assigned and is ready for cluster setup.

To complete cluster setup after all nodes are ready, download and run the System Setup utility from the NetApp Support Site and use it to discover the configured nodes.

For System Setup, this node's management address is: <<var_node01_mgmt_ip>>.

Alternatively, you can use the "cluster setup" command to configure the cluster.

2. Press Enter and log in to the node with the admin user id and no password.

3. At the node command prompt, enter the following commands:

::> storage failover modify -mode ha

Mode set to HA. Reboot node to activate HA.

::> system node reboot

Warning: Are you sure you want to reboot node "localhost"? {y|n}: y

4. After reboot, set up the node with the preassigned values.

Welcome to node setup.

You can enter the following commands at any time:

"help" or "?" - if you want to have a question clarified,

"back" - if you want to change previously answered questions, and

"exit" or "quit" - if you want to quit the setup wizard.

Any changes you made before quitting will be saved.

To accept a default or omit a question, do not enter a value.

Enter the node management interface port [e0M]: Enter

Enter the node management interface IP address [<<var_node01_mgmt_ip>>]: Enter

Enter the node management interface netmask [<<var_node01_mgmt_mask>>]: Enter

Enter the node management interface default gateway [<<var_node01_mgmt_gateway>>]: Enter

This node has its management address assigned and is ready for cluster setup.

To complete cluster setup after all nodes are ready, download and run the System Setup utility from the NetApp Support Site and use it to discover the configured nodes.

For System Setup, this node's management address is: <<var_node01_mgmt_ip>>.

Alternatively, you can use the "cluster setup" command to configure the cluster.

5. Log in to the node as the admin user with no password.

6. Repeat this procedure for storage cluster node 02.
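The node setup dialog above is a fixed sequence of prompts and replies, which lends itself to an expect-style script. The following is a minimal sketch of how the replies might be organized. It only maps prompt text to replies and does not open a console session; `NODE_SETUP_REPLIES` and `reply_for` are hypothetical names.

```python
import re

# Prompt patterns taken from the node setup dialog, paired with the reply to
# send. An empty string means "press Enter to accept the default".
NODE_SETUP_REPLIES = [
    (r"Type yes to confirm and continue", "yes"),
    (r"node management interface port", ""),
    (r"node management interface IP address", "<<var_node01_mgmt_ip>>"),
    (r"node management interface netmask", "<<var_node01_mgmt_mask>>"),
    (r"node management interface default gateway", "<<var_node01_mgmt_gateway>>"),
]


def reply_for(prompt_line, replies=NODE_SETUP_REPLIES):
    """Return the reply for the first matching prompt, or None if nothing matches."""
    for pattern, reply in replies:
        if re.search(pattern, prompt_line):
            return reply
    return None


print(repr(reply_for("Enter the node management interface netmask:")))
```

Returning `None` for unrecognized lines lets a calling script skip informational output, such as the AutoSupport notice, instead of replying to it.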

Create Cluster on Node 01

In clustered Data ONTAP, the first node in the cluster performs the cluster create operation. All other nodes perform a cluster join operation. The first node in the cluster is considered node 01.

Table 16 Cluster create in Clustered Data ONTAP Prerequisites

| Cluster Detail | Cluster Detail Value |
|---|---|
| Cluster name | <<var_clustername>> |
| Clustered Data ONTAP base license | <<var_cluster_base_license_key>> |
| Cluster management IP address | <<var_clustermgmt_ip>> |
| Cluster management netmask | <<var_clustermgmt_mask>> |
| Cluster management port | <<var_clustermgmt_port>> |
| Cluster management gateway | <<var_clustermgmt_gateway>> |
| Cluster node01 IP address | <<var_node01_mgmt_ip>> |
| Cluster node01 netmask | <<var_node01_mgmt_mask>> |
| Cluster node01 gateway | <<var_node01_mgmt_gateway>> |

7. Run the cluster setup command to start the Cluster Setup wizard.

cluster setup

Welcome to the cluster setup wizard.

You can enter the following commands at any time:

"help" or "?" - if you want to have a question clarified,

"back" - if you want to change previously answered questions, and

"exit" or "quit" - if you want to quit the cluster setup wizard.

Any changes you made before quitting will be saved.

You can return to cluster setup at any time by typing "cluster setup".

To accept a default or omit a question, do not enter a value.

Do you want to create a new cluster or join an existing cluster? {create, join}:

Note: If a login prompt appears instead of the Cluster Setup wizard, log in using the factory default settings and then enter the cluster setup command.

To create a new cluster, complete the following steps:

8. Run the following command to create a new cluster:

create

9. Enter no for the single-node cluster option.

Do you intend for this node to be used as a single node cluster? {yes, no} [no]: no

10. Enter no for cluster network using network switches.

Will the cluster network be configured to use network switches? [yes]:no

11. The system defaults are displayed. Enter yes to use the system defaults. Use the following prompts to configure the cluster ports.

Existing cluster interface configuration found:

Port MTU IP Netmask

e0a 9000 169.254.118.102 255.255.0.0

e0b 9000 169.254.152.110 255.255.0.0

e0c 9000 169.254.191.92 255.255.0.0

e0d 9000 169.254.233.52 255.255.0.0

Do you want to use this configuration? {yes, no} [yes]: no

System Defaults:

Private cluster network ports [e0a,e0c].

Cluster port MTU values will be set to 9000.

Cluster interface IP addresses will be automatically generated.

Do you want to use these defaults? {yes, no} [yes]: yes

12. The steps to create a cluster are displayed.

Enter the cluster administrator's (username "admin") password: <<var_password>>

Retype the password: <<var_password>>

It can take several minutes to create cluster interfaces...

Step 1 of 5: Create a Cluster

You can type "back", "exit", or "help" at any question.

Enter the cluster name: <<var_clustername>>

Enter the cluster base license key: <<var_cluster_base_license_key>>

Creating cluster <<var_clustername>>

Enter an additional license key []:<<var_iscsi_license>>

Note: The cluster is created. This can take a minute or two.

Note: For this validated architecture, NetApp recommends installing license keys for NetApp SnapRestore®, NetApp FlexClone®, and NetApp SnapManager® Suite. In addition, install all required storage protocol licenses and all licenses that came with the AFF bundle. After you finish entering the license keys, press Enter.

Enter the cluster management interface port [e0e]: e0i

Enter the cluster management interface IP address: <<var_clustermgmt_ip>>

Enter the cluster management interface netmask: <<var_clustermgmt_mask>>

Enter the cluster management interface default gateway: <<var_clustermgmt_gateway>>

13. Enter the DNS domain name.

Enter the DNS domain names:<<var_dns_domain_name>>

Enter the name server IP addresses:<<var_nameserver_ip>>

Note: If you have more than one name server IP address, separate the IP addresses with a comma.

14. Set up the node.

Where is the controller located []:<<var_node_location>>

Enter the node management interface port [e0M]: e0M

Enter the node management interface IP address [<<var_node01_mgmt_ip>>]: Enter

Enter the node management interface netmask [<<var_node01_mgmt_mask>>]: Enter

Enter the node management interface default gateway [<<var_node01_mgmt_gateway>>]: Enter

The node management interface has been modified to use port e0M with IP address <<var_node01_mgmt_ip>>.

This system will send event messages and weekly reports to NetApp Technical Support.

To disable this feature, enter "autosupport modify -support disable" within 24 hours.

Enabling AutoSupport can significantly speed problem determination and resolution should a problem occur on your system.

For further information on AutoSupport, please see: http://support.netapp.com/autosupport/

Press enter to continue: Enter

Cluster "<<var_clustername>>" has been created.

To complete cluster setup, you must join each additional node to the cluster

by running "cluster setup" on each node.

Once all nodes have been joined to the cluster, see the Clustered Data ONTAP

Software Setup Guide for information about additional system configuration

tasks. You can find the Software Setup Guide on the NetApp Support Site.

To complete system configuration, you can use either OnCommand System Manager

or the Data ONTAP command-line interface.

To access OnCommand System Manager, point your web browser to the cluster

management IP address (<<var_clustermgmt_ip>>).

To access the command-line interface, connect to the cluster management

IP address (for example, ssh admin@<<var_clustermgmt_ip>>).

<<var_clustername>>::>

Note: The node management interface can be on the same subnet as the cluster management interface, or it can be on a different subnet. In this document it is assumed to be on the same subnet.
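Because this document assumes that the node management and cluster management interfaces share a subnet, the planned addresses can be checked against that assumption. The following is a minimal sketch using Python's `ipaddress` module. The function name `same_subnet` and the sample addresses are hypothetical stand-ins for `<<var_node01_mgmt_ip>>`, `<<var_clustermgmt_ip>>`, and `<<var_clustermgmt_mask>>`.

```python
import ipaddress


def same_subnet(addr_a, addr_b, netmask):
    """Return True if both addresses fall in the same network under netmask."""
    net_a = ipaddress.ip_interface(f"{addr_a}/{netmask}").network
    net_b = ipaddress.ip_interface(f"{addr_b}/{netmask}").network
    return net_a == net_b


# Hypothetical node management and cluster management addresses.
print(same_subnet("192.168.10.21", "192.168.10.20", "255.255.255.0"))
```

If the two interfaces are deliberately placed on different subnets, this check returns `False` and the gateway entered for each interface needs to match its own subnet.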

Join Node 02 to Cluster

The first node in the cluster performs the cluster create operation. All other nodes perform a cluster join operation. The first node in the cluster is considered node 01, and the node joining the cluster in this example is node 02.

Table 17 Cluster join in Clustered Data ONTAP Prerequisites

| Cluster Detail | Cluster Detail Value |
|---|---|
| Cluster name | <<var_clustername>> |
| Cluster management IP address | <<var_clustermgmt_ip>> |
| Cluster node02 IP address | <<var_node02_mgmt_ip>> |
| Cluster node02 netmask | <<var_node02_mgmt_mask>> |
| Cluster node02 gateway | <<var_node02_mgmt_gateway>> |

To join node 02 to the existing cluster, complete the following steps:

1. If prompted, enter admin in the login prompt.

admin

2. Run the cluster setup command to start the Cluster Setup wizard.

cluster setup

This node's storage failover partner is already a member of a cluster.

Storage failover partners must be members of the same cluster.

The cluster setup wizard will default to the cluster join dialog.

Welcome to the cluster setup wizard.

You can enter the following commands at any time:

"help" or "?" - if you want to have a question clarified,

"back" - if you want to change previously answered questions, and

"exit" or "quit" - if you want to quit the cluster setup wizard.

Any changes you made before quitting will be saved.

You can return to cluster setup at any time by typing "cluster setup".

To accept a default or omit a question, do not enter a value.

Do you want to create a new cluster or join an existing cluster?

{join}:

Note: If a login prompt is displayed instead of the Cluster Setup wizard, start the wizard by logging in using the factory default settings, and then enter the cluster setup command.

3. Run the following command to join a cluster:

join

4. Data ONTAP detects the existing cluster and offers to join it. Follow the prompts to join the cluster.

Existing cluster interface configuration found:

Port MTU IP Netmask

e0a 9000 169.254.1.79 255.255.0.0

e0b 9000 169.254.54.223 255.255.0.0

e0c 9000 169.254.100.157 255.255.0.0

e0d 9000 169.254.138.142 255.255.0.0

Do you want to use this configuration? {yes, no} [yes]: no

System Defaults:

Private cluster network ports [e0a,e0c].

Cluster port MTU values will be set to 9000.

Cluster interface IP addresses will be automatically generated.

Do you want to use these defaults? {yes, no} [yes]:Enter

It can take several minutes to create cluster interfaces...

5. The steps to join a cluster are displayed.

Step 1 of 3: Join an Existing Cluster

You can type "back", "exit", or "help" at any question.

Enter the name of the cluster you would like to join [<<var_clustername>>]:Enter

Joining cluster <<var_clustername>>

Starting cluster support services ..

This node has joined the cluster <<var_clustername>>.

Step 2 of 3: Configure Storage Failover (SFO)

You can type "back", "exit", or "help" at any question.

SFO is enabled.

Step 3 of 3: Set Up the Node

You can type "back", "exit", or "help" at any question.

Notice: HA is configured in management.

Note: The node should find the cluster name. The cluster joining can take a few minutes.

6. Set up the node.

Enter the node management interface port [e0M]: e0M

Enter the node management interface IP address [<<var_node02_mgmt_ip>>]: Enter

Enter the node management interface netmask [<<var_node02_mgmt_mask>>]: Enter

Enter the node management interface default gateway [<<var_node02_mgmt_gateway>>]: Enter

The node management interface has been modified to use port e0M with IP address <<var_node02_mgmt_ip>>.

This system will send event messages and weekly reports to NetApp Technical Support.

To disable this feature, enter "autosupport modify -support disable" within 24 hours.

Enabling AutoSupport can significantly speed problem determination and resolution should a problem occur on your system.

For further information on AutoSupport, please see: http://support.netapp.com/autosupport/

Press enter to continue: Enter

This node has been joined to cluster "<<var_clustername>>".

To complete cluster setup, you must join each additional node to the cluster

by running "cluster setup" on each node.

Once all nodes have been joined to the cluster, see the Clustered Data ONTAP

Software Setup Guide for information about additional system configuration

tasks. You can find the Software Setup Guide on the NetApp Support Site.

To complete system configuration, you can use either OnCommand System Manager

or the Data ONTAP command-line interface.

To access OnCommand System Manager, point your web browser to the cluster

management IP address (<<var_clustermgmt_ip>>).

To access the command-line interface, connect to the cluster management

IP address (for example, ssh admin@<<var_clustermgmt_ip>>).

Note: The node management interface can be on the same subnet as the cluster management interface, or it can be on a different subnet. In this document it is assumed to be on the same subnet.

Log In to Cluster

To log in to the cluster, complete the following steps:

1. Open an SSH connection to either the cluster management IP address or the cluster host name.

2. Log in as the admin user with the password you provided earlier.

Zero All Spare Disks

To zero all spare disks in the cluster, complete the following step:

1. Run the following command:

disk zerospares

Note: Disk autoassign should have assigned half of the connected disks to each node in the HA pair. If a different disk assignment is required, disk autoassignment must be disabled on both nodes in the HA pair by running the disk option modify command. Spare disks can then be moved from one node to another by running the disk removeowner and disk assign commands.

Set Onboard UTA2 Ports Personality

To set the personality of the onboard Unified Target Adapter 2 (UTA2), complete the following steps:

1. Verify the Current Mode and Current Type of the ports by running the ucadmin show command.

ucadmin show

Current Current Pending Pending Admin

Node Adapter Mode Type Mode Type Status

------------ ------- ------- --------- ------- --------- -----------

<<var_node01>>

0e cna target - - online

<<var_node01>>

0f cna target - - online

<<var_node01>>

0g cna target - - online

<<var_node01>>

0h cna target - - online

<<var_node02>>

0e cna target - - online

<<var_node02>>

0f cna target - - online

<<var_node02>>

0g cna target - - online

<<var_node02>>

0h cna target - - online

8 entries were displayed.

2. Verify that the Current Mode of all the ports in use is cna and the Current Type is set to target. If not, change the port personality by running the following command:

ucadmin modify -node <home node of the port> -adapter <port name> -mode cna -type target

Note: The ports must be offline to run this command. To take an adapter offline, run the fcp adapter modify -node <home node of the port> -adapter <port name> -state down command. Ports must be converted in pairs (for example, 0c and 0d). After the conversion, a reboot is required, and the ports must be brought back to the up state.

Set Auto-Revert on Cluster Management

To set the auto-revert parameter on the cluster management interface, complete the following step:

Note: The storage virtual machine (SVM) is referred to as Vserver (or vserver) in the GUI and CLI.

1. Run the following command:

network interface modify -vserver <<var_clustername>> -lif cluster_mgmt -auto-revert true

Set Up Management Broadcast Domain

To set up the default broadcast domain for management network interfaces, complete the following step:

1. Run the following commands:

broadcast-domain remove-ports -broadcast-domain Default -ports <<var_node01>>:e0b,<<var_node01>>:e0d,<<var_node01>>:e0e,<<var_node01>>:e0f,<<var_node01>>:e0g,<<var_node01>>:e0h,<<var_node01>>:e0j,<<var_node01>>:e0k,<<var_node01>>:e0l,<<var_node02>>:e0b,<<var_node02>>:e0d,<<var_node02>>:e0e,<<var_node02>>:e0f,<<var_node02>>:e0g,<<var_node02>>:e0h,<<var_node02>>:e0j,<<var_node02>>:e0k,<<var_node02>>:e0l

broadcast-domain show
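The port list in the remove-ports command is long and easy to mistype. The following sketch, which assumes the node names and port names are held in Python lists, builds the `-ports` argument in the same node:port order used above. The function name `remove_ports_command` is hypothetical; only the command text it emits comes from this guide.

```python
def remove_ports_command(nodes, ports, domain="Default"):
    """Build the remove-ports command with every node:port pair, node by node."""
    port_list = ",".join(f"{node}:{port}" for node in nodes for port in ports)
    return (
        f"broadcast-domain remove-ports -broadcast-domain {domain} "
        f"-ports {port_list}"
    )


# Ports removed from the Default broadcast domain in this guide.
PORTS = ["e0b", "e0d", "e0e", "e0f", "e0g", "e0h", "e0j", "e0k", "e0l"]

print(remove_ports_command(["<<var_node01>>", "<<var_node02>>"], PORTS))
```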

Set Up Service Processor Network Interface

To assign a static IPv4 address to the service processor on each node, complete the following step:

1. Run the following commands:

system service-processor network modify -node <<var_node01>> -address-family IPv4 -enable true -dhcp none -ip-address <<var_node01_sp_ip>> -netmask <<var_node01_sp_mask>> -gateway <<var_node01_sp_gateway>>

system service-processor network modify -node <<var_node02>> -address-family IPv4 -enable true -dhcp none -ip-address <<var_node02_sp_ip>> -netmask <<var_node02_sp_mask>> -gateway <<var_node02_sp_gateway>>

Note: The service processor IP addresses should be in the same subnet as the node management IP addresses.
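The same-subnet recommendation can be checked before the addresses are entered. This is a minimal sketch using the Python standard library `ipaddress` module; the helper name `same_subnet` and the sample addresses are assumptions for illustration only.

```python
import ipaddress


def same_subnet(node_mgmt_ip, node_mgmt_mask, sp_ip):
    """Return True if sp_ip lies in the subnet defined by the node management IP and mask."""
    # strict=False lets a host address (not a network address) define the network.
    network = ipaddress.ip_network(f"{node_mgmt_ip}/{node_mgmt_mask}", strict=False)
    return ipaddress.ip_address(sp_ip) in network


# Hypothetical addresses for node 01.
print(same_subnet("192.168.10.61", "255.255.255.0", "192.168.10.71"))
```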

Create Aggregates

An aggregate containing the root volume is created during the Data ONTAP setup process. To create additional aggregates, determine the aggregate name, the node on which to create it, and the number of disks it will contain.

To create new aggregates, complete the following steps:

1. Run the following commands:

aggr create -aggregate aggr1_node01 -nodes <<var_node01>> -diskcount <<var_num_disks>>

aggr create -aggregate aggr1_node02 -nodes <<var_node02>> -diskcount <<var_num_disks>>

Note: Retain at least one disk (select the largest disk) in the configuration as a spare. A best practice is to have at least one spare for each disk type and size.

Note: Start with five disks initially; you can add disks to an aggregate when additional storage is required. In an AFF configuration with a small number of SSDs, it may be desirable to create an aggregate with all but one remaining disk (spare) assigned to the controller.

Note: The aggregate cannot be created until disk zeroing completes. Run the aggr show command to display aggregate creation status. Do not proceed until both aggr1_node01 and aggr1_node02 are online.

2. Disable NetApp Snapshot® copies for the two data aggregates recently created.

node run <<var_node01>> aggr options aggr1_node01 nosnap on

node run <<var_node02>> aggr options aggr1_node02 nosnap on

3. Delete any existing Snapshot copies for the two data aggregates.

node run <<var_node01>> snap delete -A -a -f aggr1_node01

node run <<var_node02>> snap delete -A -a -f aggr1_node02

4. Rename the root aggregate on node 01 to match the naming convention for this aggregate on node 02.

aggr show

aggr rename -aggregate aggr0 -newname <<var_node01_rootaggrname>>

Verify Storage Failover

To confirm that storage failover is enabled, run the following commands for a failover pair:

1. Verify the status of storage failover.

storage failover show

Note: Both nodes, <<var_node01>> and <<var_node02>>, must be capable of performing a takeover. If they are, continue with step 3.

2. Enable failover on one of the two nodes.

storage failover modify -node <<var_node01>> -enabled true

Note: Enabling failover on one node enables it for both nodes.

3. Verify the HA status for a two-node cluster.

Note: This step is not applicable for clusters with more than two nodes.

cluster ha show

4. Continue with step 6 if high availability is configured.

5. Enable HA mode only for the two-node cluster.

Note: Do not run this command for clusters with more than two nodes because it will cause problems with failover.

cluster ha modify -configured true

Do you want to continue? {y|n}: y

6. Verify that hardware assist is correctly configured and, if needed, modify the partner IP address.

storage failover hwassist show

storage failover modify -hwassist-partner-ip <<var_node02_mgmt_ip>> -node <<var_node01>>

storage failover modify -hwassist-partner-ip <<var_node01_mgmt_ip>> -node <<var_node02>>
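In the hardware-assist commands, each node is given the management IP address of its HA partner, which is easy to reverse by accident. The sketch below generates the pair of commands from a mapping of node name to management IP. The helper `hwassist_commands` and the sample values are hypothetical.

```python
def hwassist_commands(mgmt_ips):
    """mgmt_ips maps each node name in a two-node HA pair to its management IP."""
    if len(mgmt_ips) != 2:
        raise ValueError("expected exactly two nodes in the HA pair")
    (node_a, ip_a), (node_b, ip_b) = mgmt_ips.items()
    # Each node points at its partner's management IP, not its own.
    return [
        f"storage failover modify -hwassist-partner-ip {ip_b} -node {node_a}",
        f"storage failover modify -hwassist-partner-ip {ip_a} -node {node_b}",
    ]


# Hypothetical node names and addresses.
for line in hwassist_commands({"node01": "192.168.10.61", "node02": "192.168.10.62"}):
    print(line)
```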

Disable Flow Control on UTA2 Ports

NetApp recommends disabling flow control on all of the 10GbE and UTA2 ports that are connected to external devices. To disable flow control, complete the following steps:

1. Run the following commands to configure node 01:

network port modify -node <<var_node01>> -port e0a,e0b,e0c,e0d,e0e,e0f,e0g,e0h -flowcontrol-admin none

Warning: Changing the network port settings will cause a several second interruption in carrier.

Do you want to continue? {y|n}: y

2. Run the following commands to configure node 02:

network port modify -node <<var_node02>> -port e0a,e0b,e0c,e0d,e0e,e0f,e0g,e0h -flowcontrol-admin none

Warning: Changing the network port settings will cause a several second interruption in carrier.

Do you want to continue? {y|n}: y

network port show -fields flowcontrol-admin
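Because the same port list is typed once per node, a mistyped port name (for example, e0hq instead of e0h) is an easy error to make. The following sketch generates the flow-control command and rejects names that do not look like e0a-style ports. The name pattern is an assumption based on the port names used in this section, and `flowcontrol_command` is a hypothetical helper.

```python
import re

# Assumed shape of the data port names in this section: "e", digits, one lowercase letter.
PORT_NAME = re.compile(r"e\d+[a-z]")


def flowcontrol_command(node, ports):
    """Build the command that disables flow control on the given ports of one node."""
    bad = [port for port in ports if not PORT_NAME.fullmatch(port)]
    if bad:
        raise ValueError(f"unexpected port name(s): {', '.join(bad)}")
    return (
        f"network port modify -node {node} -port {','.join(ports)} "
        "-flowcontrol-admin none"
    )


PORTS = ["e0a", "e0b", "e0c", "e0d", "e0e", "e0f", "e0g", "e0h"]
for node in ("<<var_node01>>", "<<var_node02>>"):
    print(flowcontrol_command(node, PORTS))
```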

Disable Unused FCoE Ports

Unused data FCoE ports on active interfaces should be disabled. To disable these ports, complete the following step:

1. Run the following commands:

fcp adapter modify -node <<var_node01>> -adapter 0e -state down

fcp adapter modify -node <<var_node01>> -adapter 0g -state down

fcp adapter modify -node <<var_node02>> -adapter 0e -state down

fcp adapter modify -node <<var_node02>> -adapter 0g -state down

fcp adapter show -fields state

Configure NTP

To configure time synchronization on the cluster, complete the following steps:

1. To set the time zone for the cluster, run the following command:

timezone <<var_timezone>>

Note: For example, in the eastern United States, the time zone is America/New_York.

2. To set the date for the cluster, run the following command:

date <ccyymmddhhmm.ss>

Note: The format for the date is <[Century][Year][Month][Day][Hour][Minute].[Second]>; for example, 201309081735.17

3. Configure the Network Time Protocol (NTP) servers for the cluster.

cluster time-service ntp server create -server <<var_global_ntp_server_ip>>
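The date string expected in step 2 can be produced from a timestamp rather than typed by hand. This is a small sketch using the Python standard library; the helper name `ontap_date` is hypothetical, and the sample timestamp is the one from the note in step 2.

```python
from datetime import datetime


def ontap_date(moment: datetime) -> str:
    """Format a timestamp as ccyymmddhhmm.ss for the date command."""
    return moment.strftime("%Y%m%d%H%M.%S")


# September 8, 2013, 17:35:17
print("date " + ontap_date(datetime(2013, 9, 8, 17, 35, 17)))  # date 201309081735.17
```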

Configure SNMP

To configure SNMP, complete the following steps:

1. Configure SNMP basic information, such as the location and contact. When polled, this information is visible as the sysLocation and sysContact variables in SNMP.

snmp contact <<var_snmp_contact>>

snmp location "<<var_snmp_location>>"

snmp init 1

options snmp.enable on

2. Configure SNMP traps to send to remote hosts, such as a DFM server or another fault management system.

snmp traphost add <<var_oncommand_server_fqdn>>

Configure SNMPv1 Access

To configure SNMPv1 access, complete the following steps:

1. Set the shared secret plain-text password, which is called a community.

snmp community add ro <<var_snmp_community>>

2. Use the delete all command with caution. If community strings are used for other monitoring products, the delete all command will remove them.

Create SNMPv3 User

SNMPv3 requires that a user be defined and configured for authentication. To create and configure a user for SNMPv3, complete the following steps:

1. Create a user called snmpv3user.

security login create -username snmpv3user -authmethod usm -application snmp

2. Enter the authoritative entity's engine ID and select md5 as the authentication protocol.

3. Run the security snmpusers command to view the engine ID.

4. When prompted, enter a password of at least eight characters for the authentication protocol.

5. Select des as the privacy protocol.

6. When prompted, enter a password of at least eight characters for the privacy protocol.

Configure AutoSupport

AutoSupport sends support summary information to NetApp through HTTPS. To configure AutoSupport, complete the following step:

1. Run the following command:

system node autosupport modify -node * -state enable -mail-hosts <<var_mailhost>> -transport https -support enable -noteto <<var_storage_admin_email>>

Enable Cisco Discovery Protocol

To enable Cisco Discovery Protocol (CDP) on the NetApp storage controllers, complete the following step:

Note: To be effective, CDP must also be enabled on directly connected networking equipment such as switches and routers.

1. Run the following command to enable CDP on Data ONTAP:

node run -node * options cdpd.enable on

Create Jumbo Frame MTU Broadcast Domains in Clustered Data ONTAP

To create the data broadcast domains with an MTU of 9000, complete the following step:

1. Run the following commands to create the broadcast domains on Data ONTAP:

broadcast-domain create -broadcast-domain Infra_NFS -mtu 9000

broadcast-domain create -broadcast-domain Infra_iSCSI-A -mtu 9000

broadcast-domain create -broadcast-domain Infra_iSCSI-B -mtu 9000

Create Interface Groups

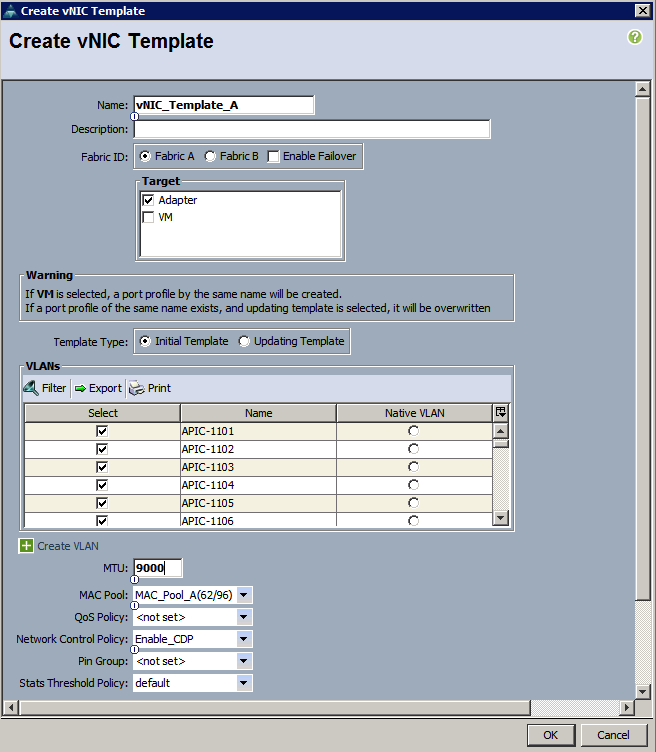

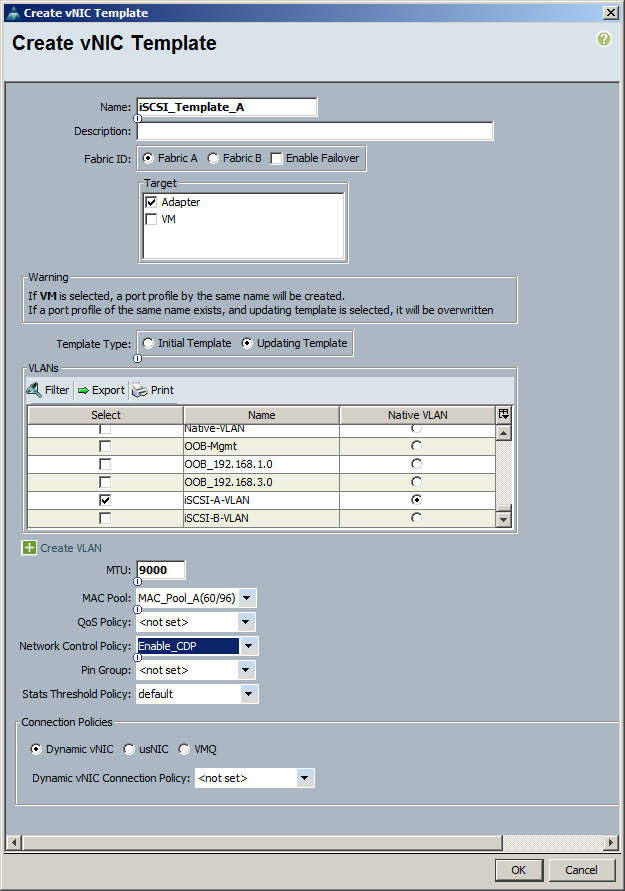

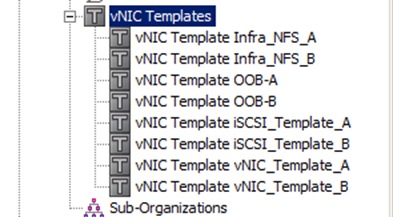

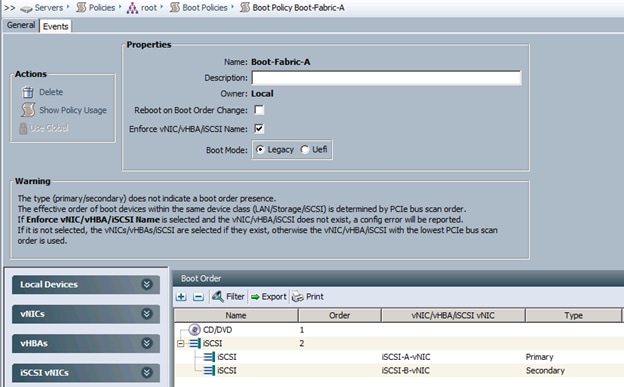

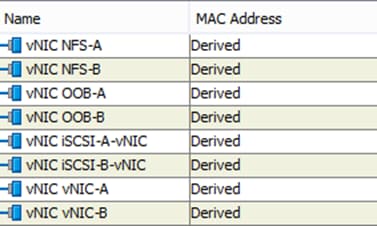

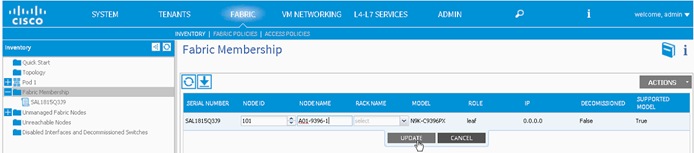

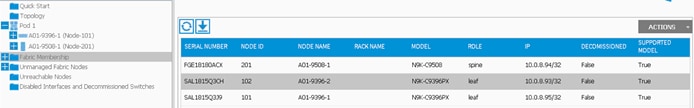

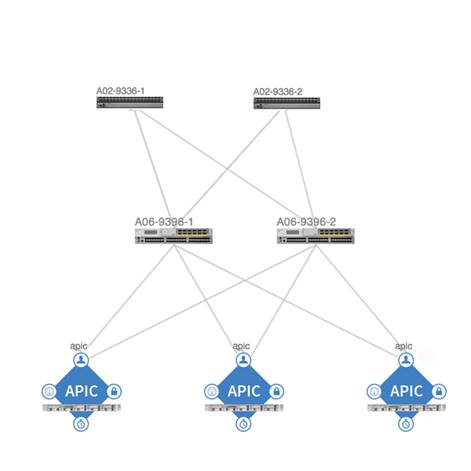

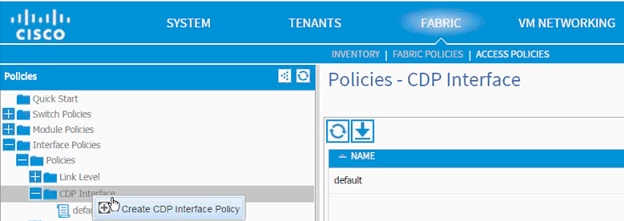

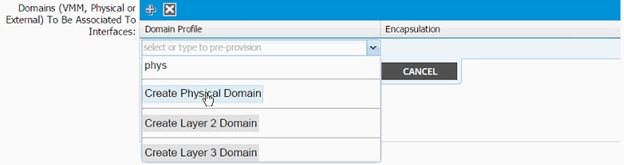

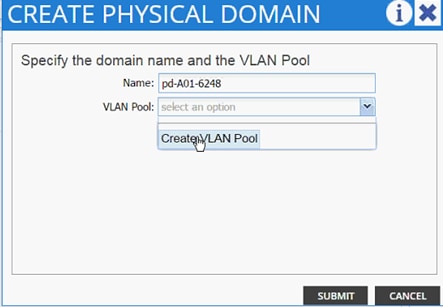

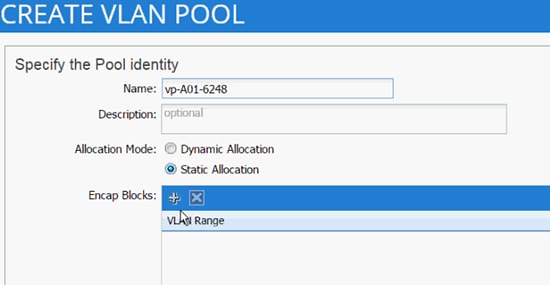

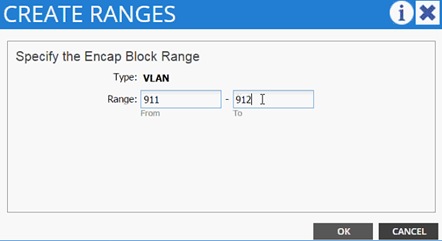

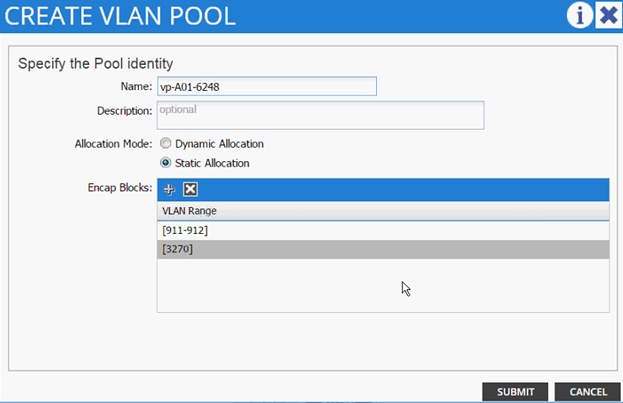

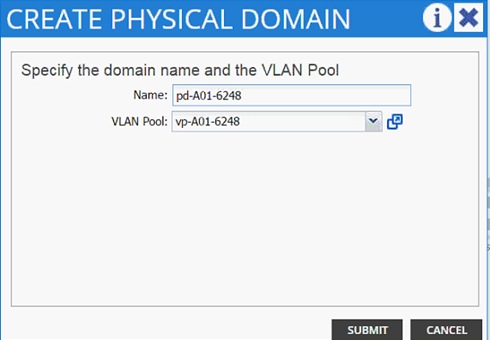

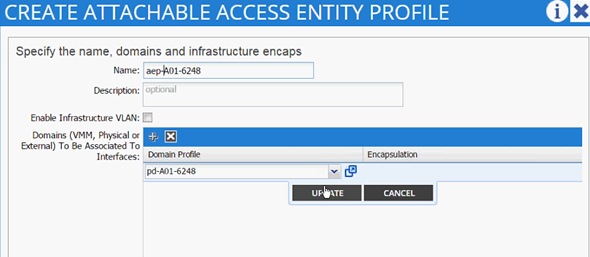

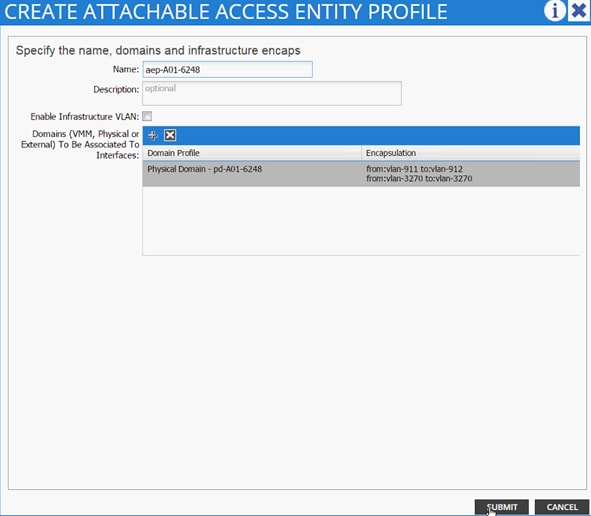

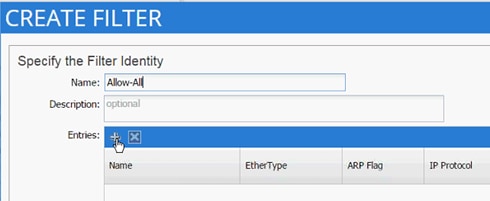

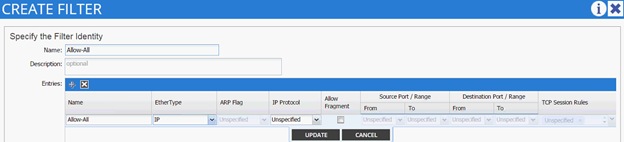

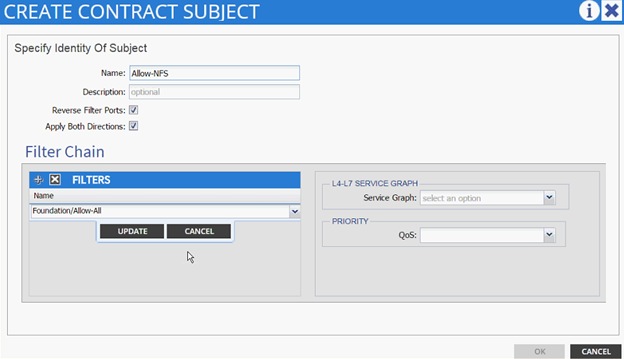

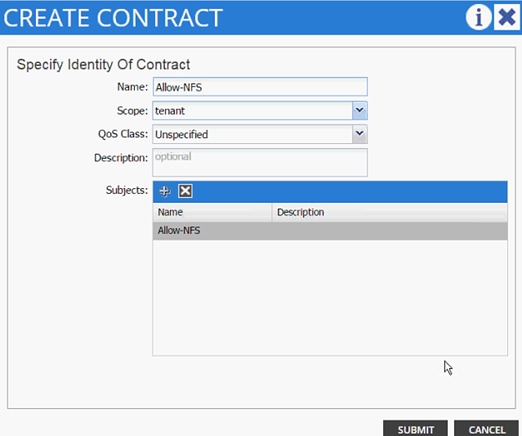

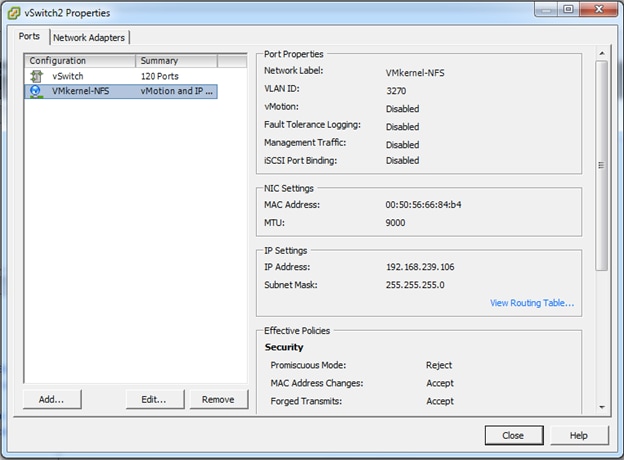

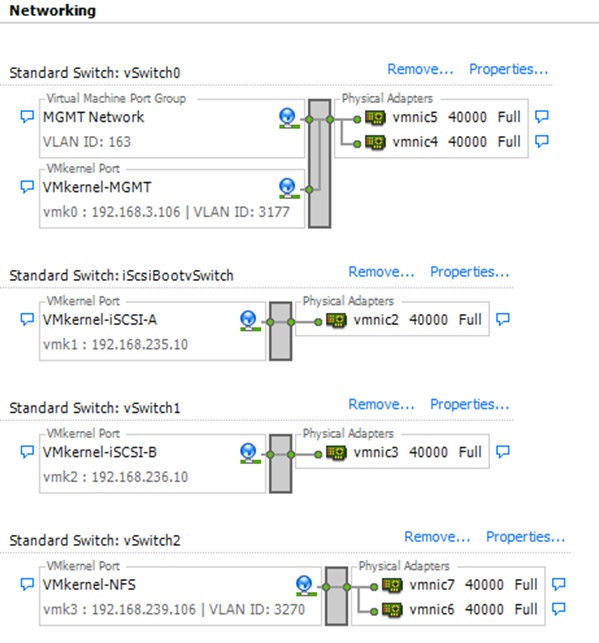

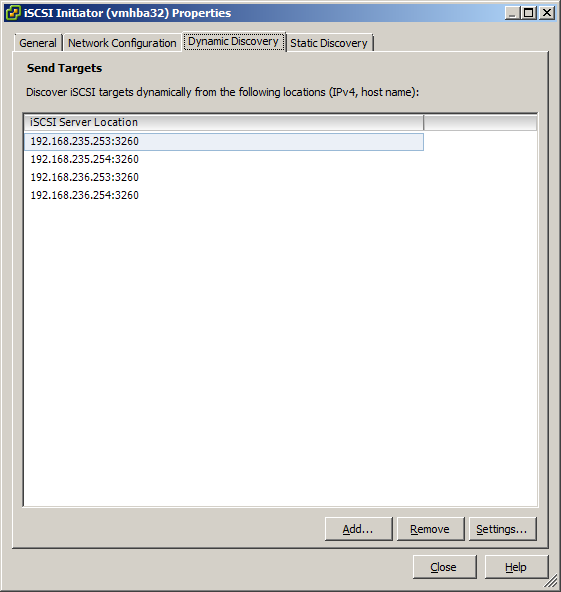

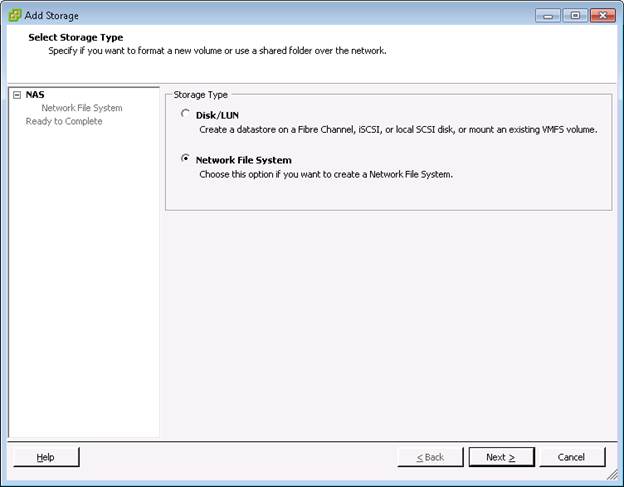

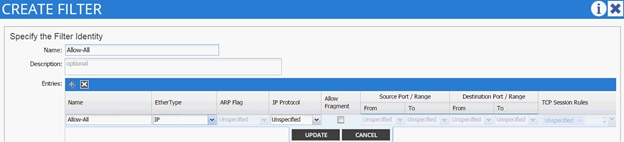

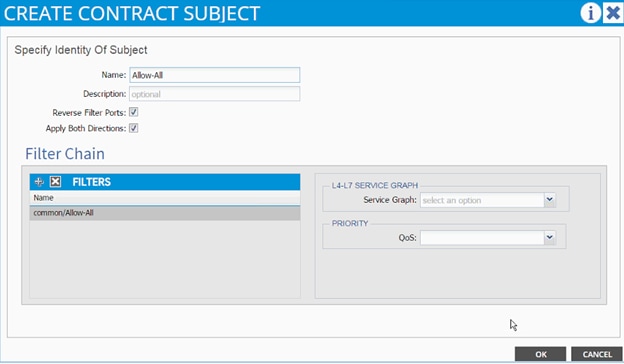

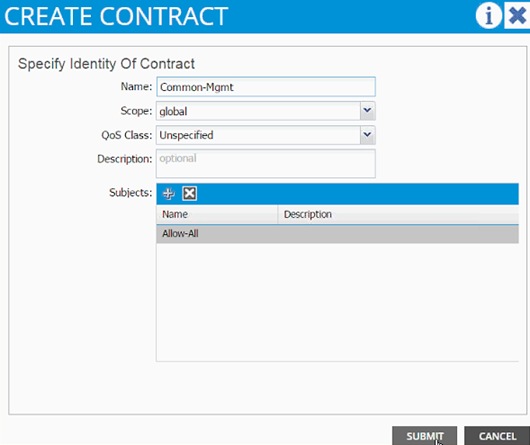

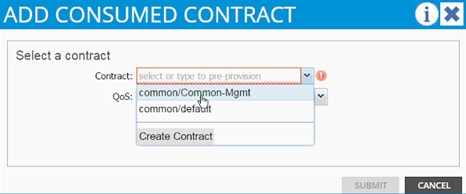

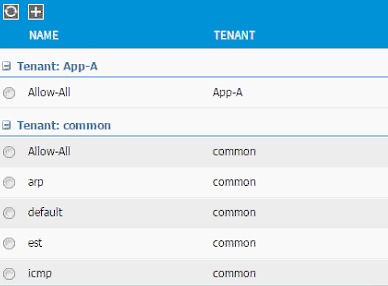

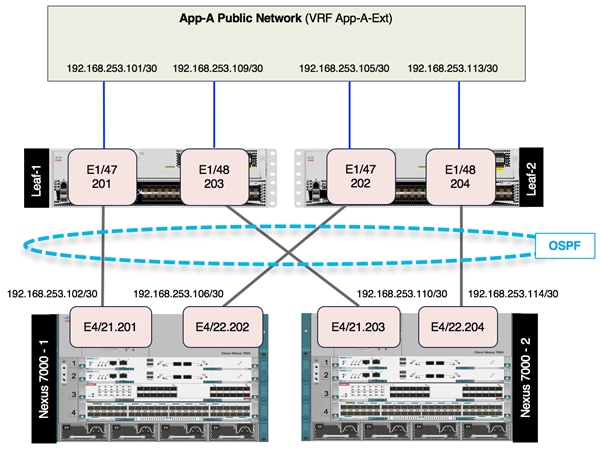

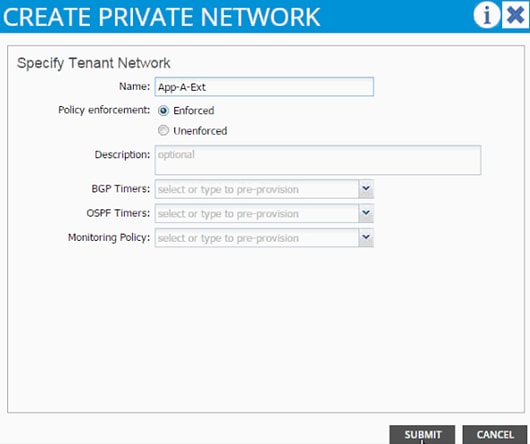

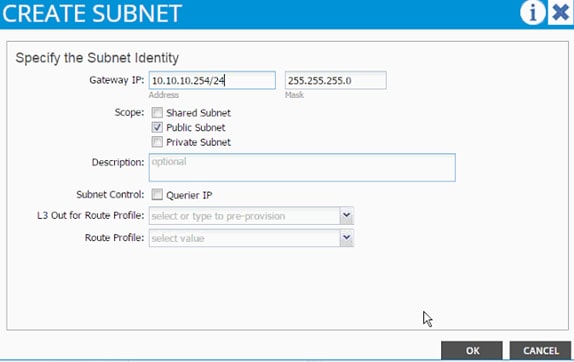

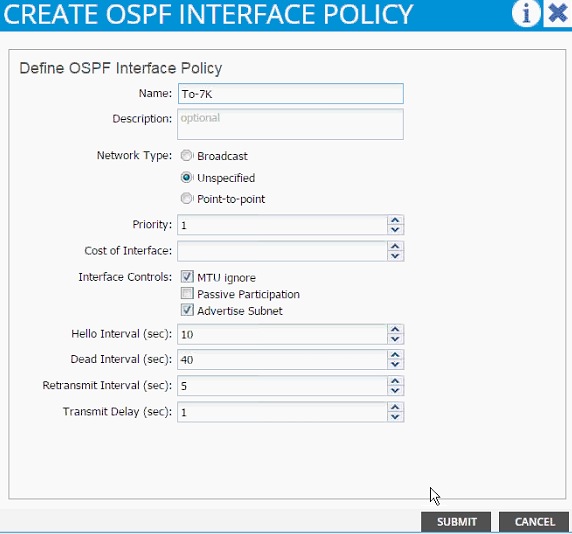

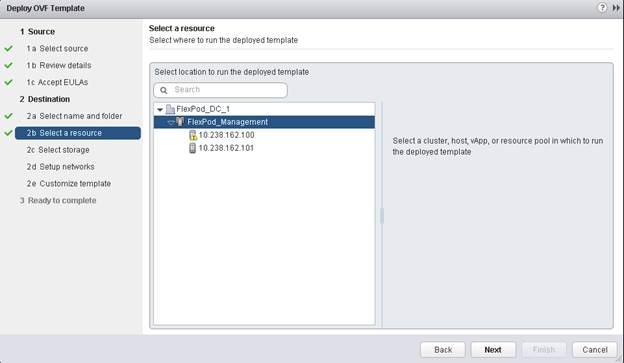

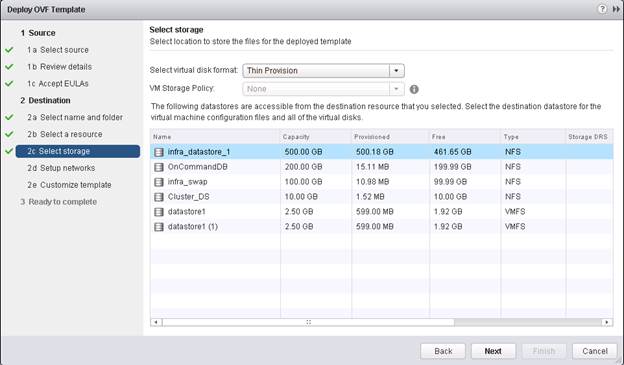

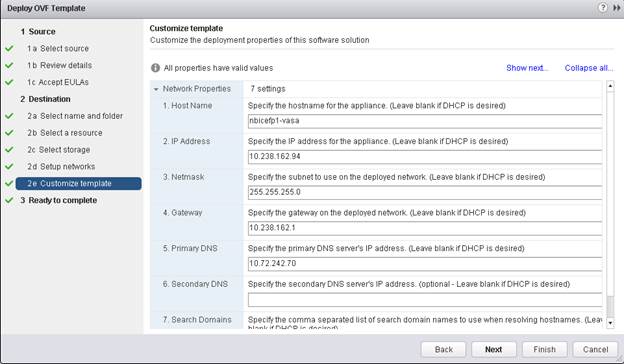

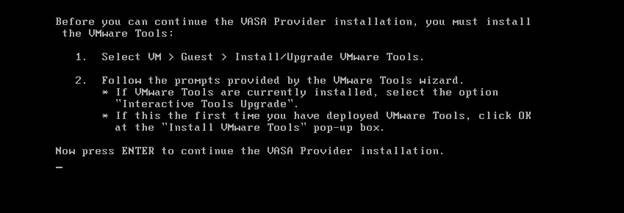

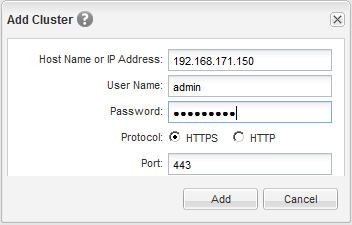

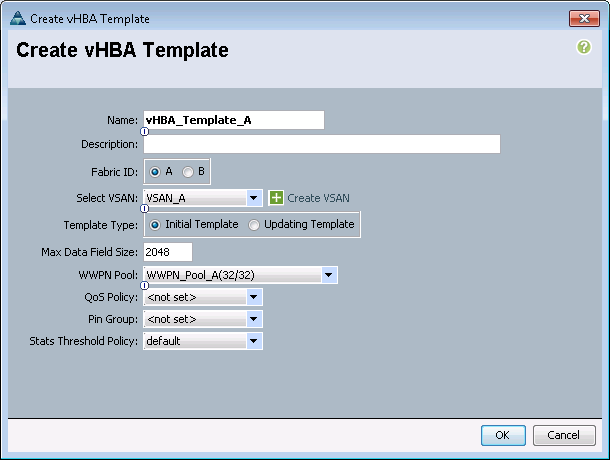

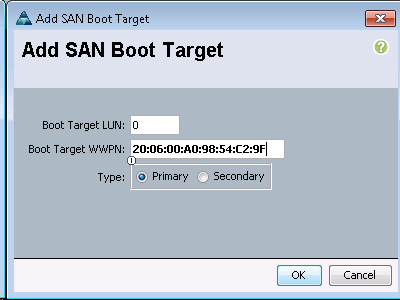

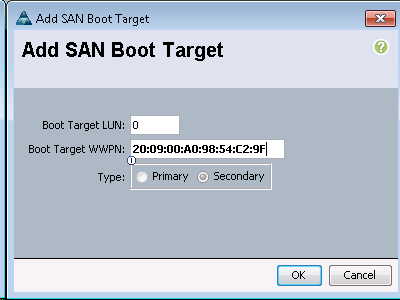

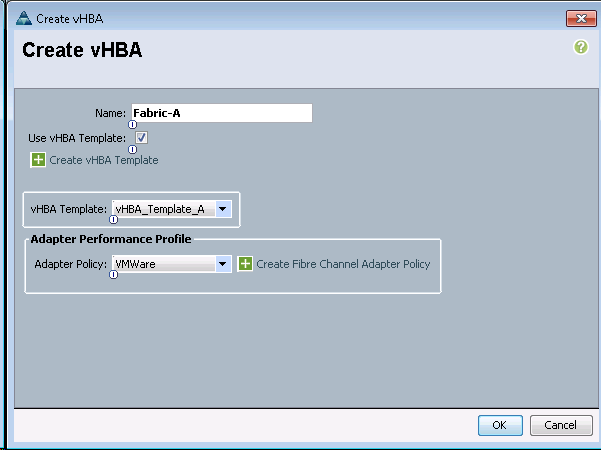

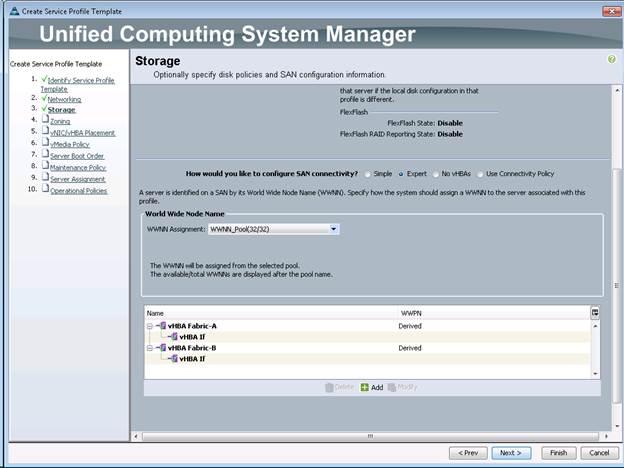

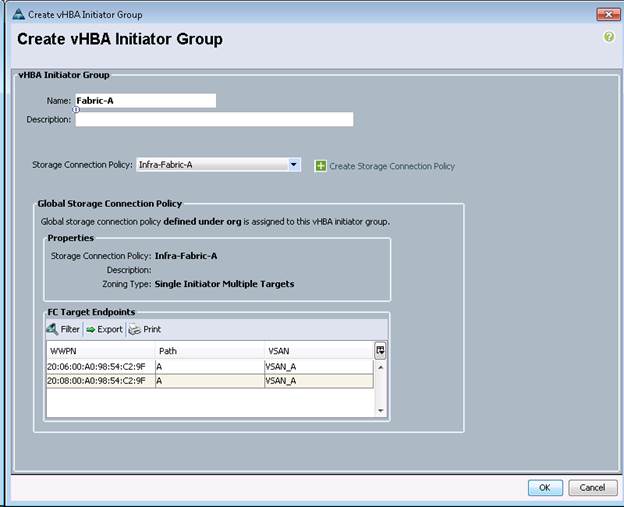

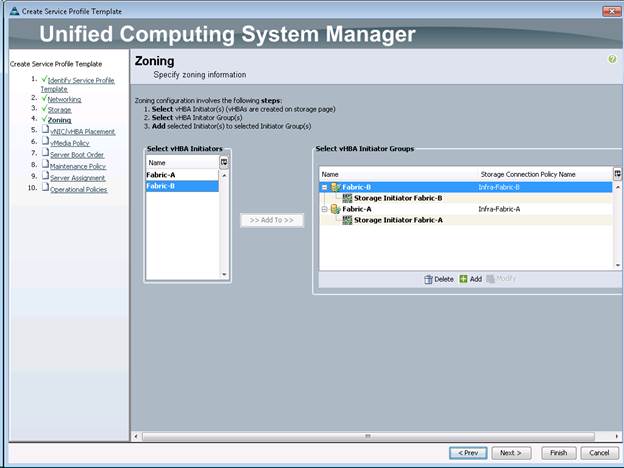

To create the LACP interface groups for the 10GbE data interfaces, complete the following step: