Cisco UCS and Nimble Unified Flash Fabric with VMware vSphere 6.5

Deploying a Cisco-Nimble Integrated Infrastructure Platform based on Cisco UCS, Nimble Unified Flash Fabric (AF7000 All Flash Array and CS5000 Adaptive Flash Array), Cisco MDS Switches and Cisco Nexus Switches

Last Updated: April 19, 2017

About the Cisco Validated Design (CVD) Program

The CVD program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information, visit http://www.cisco.com/go/designzone.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

CCDE, CCENT, Cisco Eos, Cisco Lumin, Cisco Nexus, Cisco StadiumVision, Cisco TelePresence, Cisco WebEx, the Cisco logo, DCE, and Welcome to the Human Network are trademarks; Changing the Way We Work, Live, Play, and Learn and Cisco Store are service marks; and Access Registrar, Aironet, AsyncOS, Bringing the Meeting To You, Catalyst, CCDA, CCDP, CCIE, CCIP, CCNA, CCNP, CCSP, CCVP, Cisco, the Cisco Certified Internetwork Expert logo, Cisco IOS, Cisco Press, Cisco Systems, Cisco Systems Capital, the Cisco Systems logo, Cisco Unified Computing System (Cisco UCS), Cisco UCS B-Series Blade Servers, Cisco UCS C-Series Rack Servers, Cisco UCS S-Series Storage Servers, Cisco UCS Manager, Cisco UCS Management Software, Cisco Unified Fabric, Cisco Application Centric Infrastructure, Cisco Nexus 9000 Series, Cisco Nexus 7000 Series. Cisco Prime Data Center Network Manager, Cisco NX-OS Software, Cisco MDS Series, Cisco Unity, Collaboration Without Limitation, EtherFast, EtherSwitch, Event Center, Fast Step, Follow Me Browsing, FormShare, GigaDrive, HomeLink, Internet Quotient, IOS, iPhone, iQuick Study, LightStream, Linksys, MediaTone, MeetingPlace, MeetingPlace Chime Sound, MGX, Networkers, Networking Academy, Network Registrar, PCNow, PIX, PowerPanels, ProConnect, ScriptShare, SenderBase, SMARTnet, Spectrum Expert, StackWise, The Fastest Way to Increase Your Internet Quotient, TransPath, WebEx, and the WebEx logo are registered trademarks of Cisco Systems, Inc. and/or its affiliates in the United States and certain other countries.

All other trademarks mentioned in this document or website are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (0809R)

© 2017 Cisco Systems, Inc. All rights reserved.

Table of Contents

Validated Hardware and Software Matrix

Solution Deployment – LAN Network Configuration

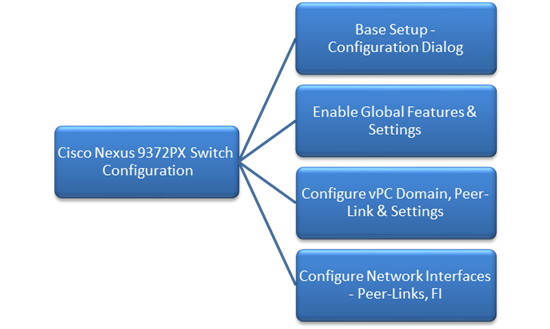

Cisco Nexus Configuration Workflow

Base Setup – Configuration Dialog

Enabling Global Features and Settings

Configure vPC Domain, Peer-Link and Settings

Configure Network Interfaces for VPC Peer Links

Configure Network Interfaces to Cisco UCS Fabric Interconnects

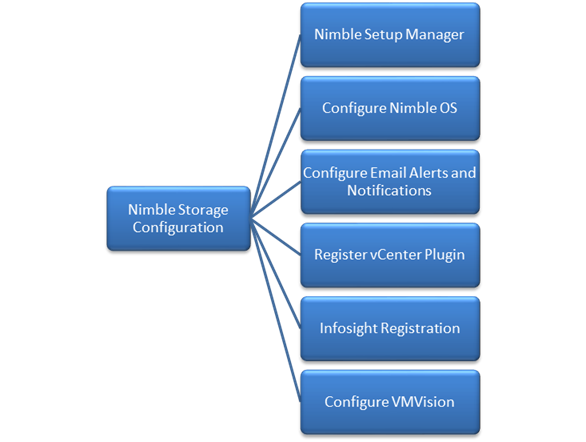

Solution Deployment – Storage Array Configuration

Nimble Storage Configuration Workflow

Base Setup of Nimble Storage Array

Initialize Nimble Storage Array

Configure Nimble OS using the GUI

Configure Array to Send Email Notifications for Alerts (Optional)

Configure Arrays to Monitor VMware Environment using VMVision

Solution Deployment – SAN Fabric Configuration

Cisco MDS Configuration Workflow

Base Setup using Configuration Dialog

Enable Global Features and Settings

Create Port Channels to Cisco UCS Fabric Interconnects

Configure FC Interfaces to Unified Flash Fabric

Configure Device Aliases for Unified Flash Fabric

Solution Deployment – Cisco UCS Configuration

Cisco UCS Configuration Workflow

Cisco UCS Configuration – Base Setup

Initial Setup of Cisco Fabric Interconnects

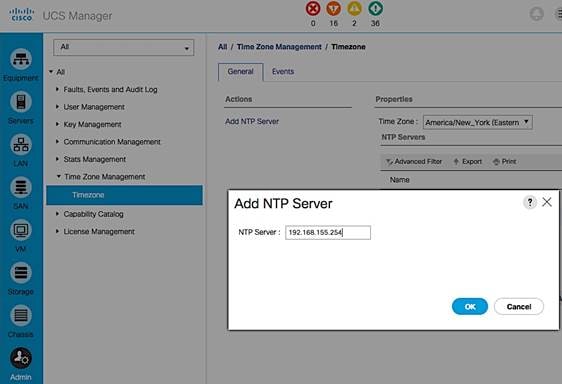

Cisco UCS Manager – Configure NTP Server

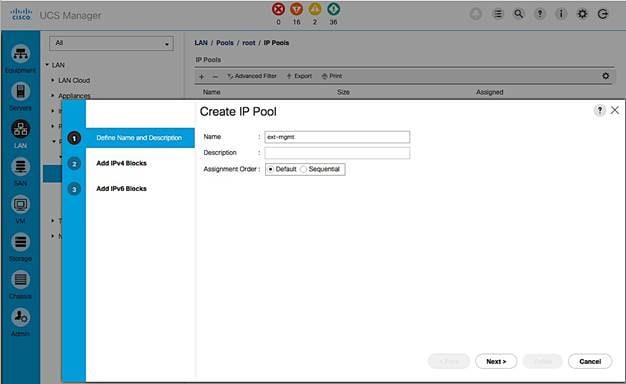

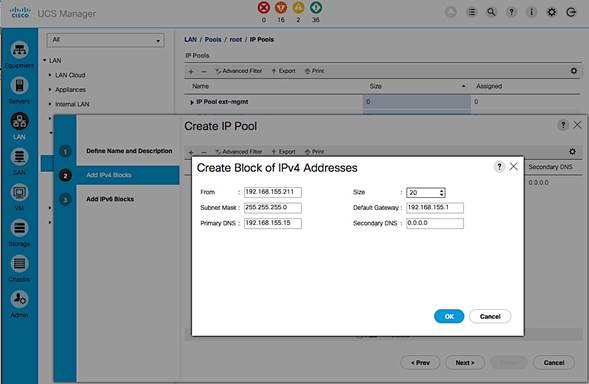

Assign Block of IP addresses for KVM Access

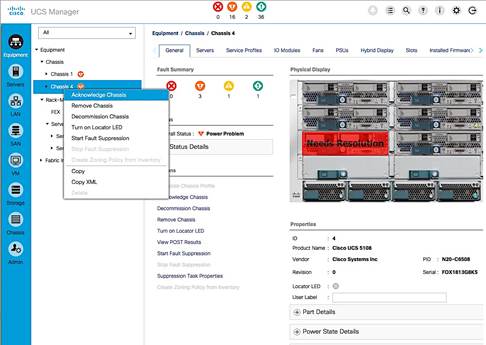

Acknowledge Cisco UCS Chassis, Cisco UCS C-series and FEX

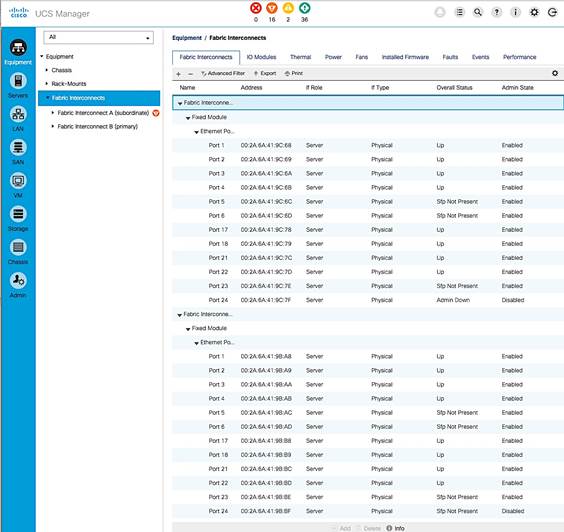

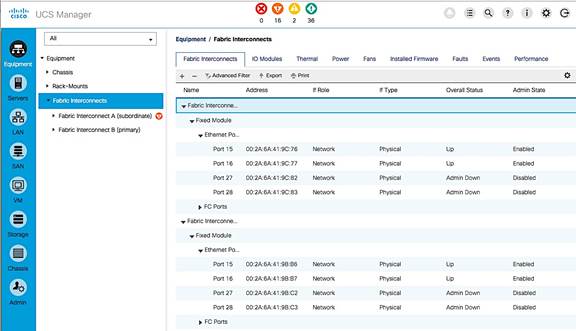

Enable Uplink Ports to Cisco Nexus 9000 Series Switches

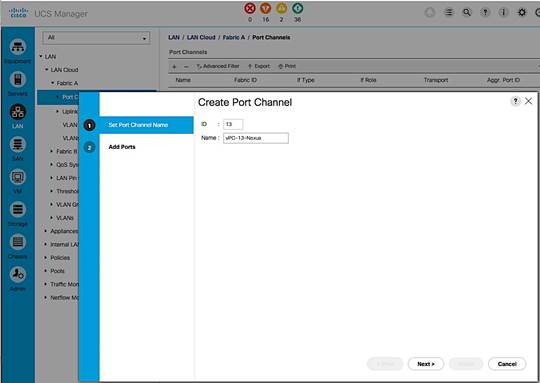

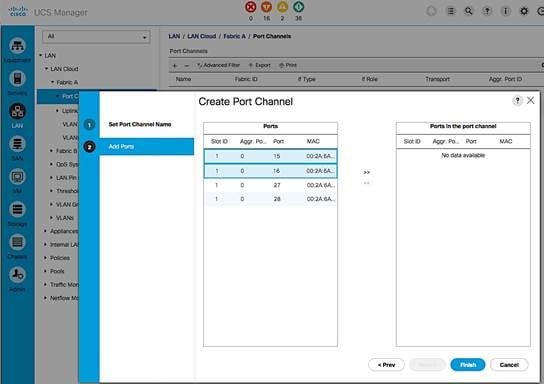

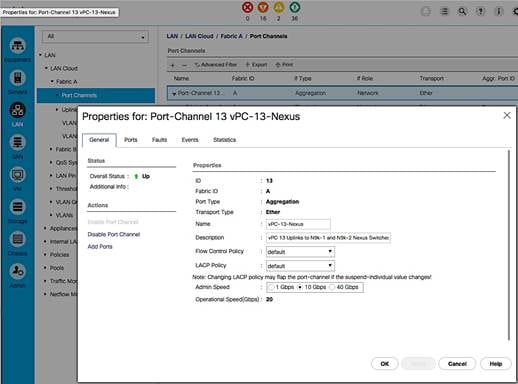

Configure Port Channels on Uplink Ports to Cisco Nexus Switches

Enable Fibre Channel Ports to Cisco MDS 9100 Series Switches

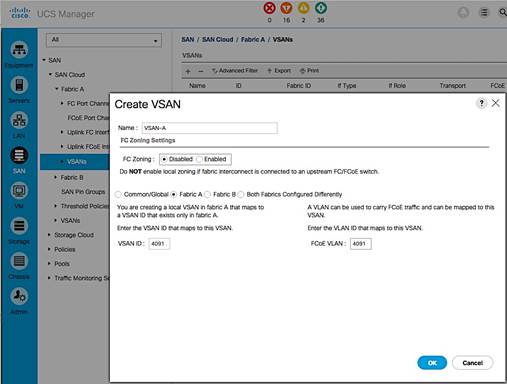

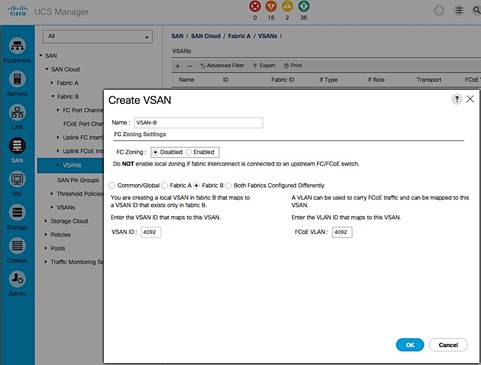

Create VSAN for Fibre Channel Interfaces

Configure Port Channels on Fibre Channel Uplinks to Cisco MDS Switches.

Cisco UCS Configuration Backup

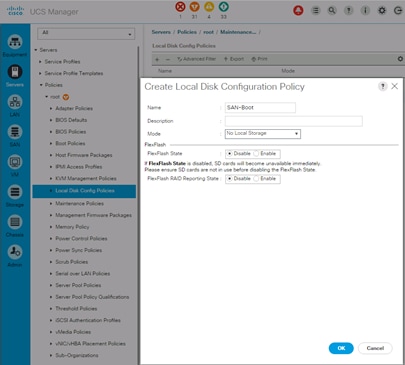

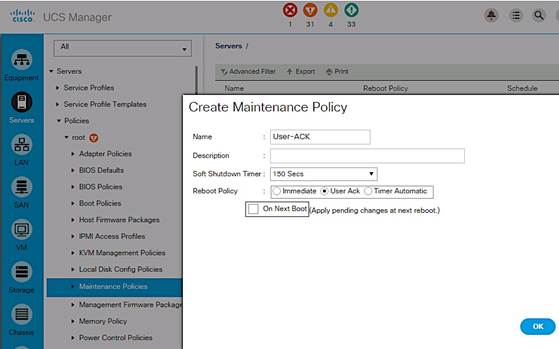

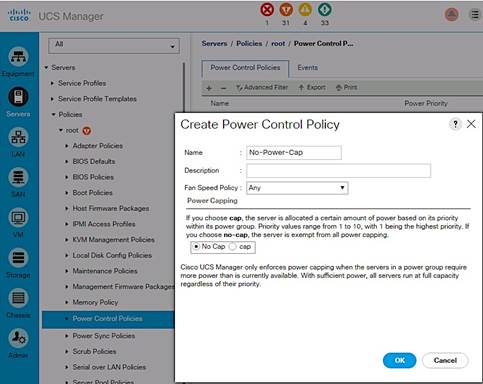

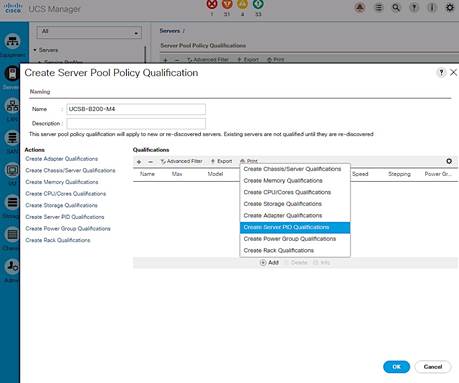

Cisco UCS Configuration – Server

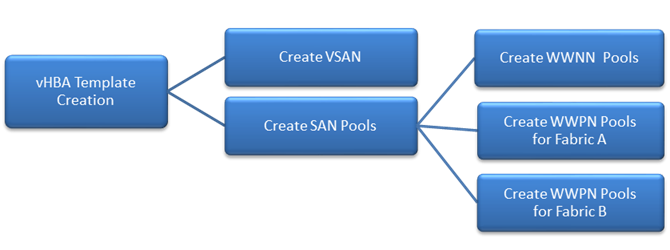

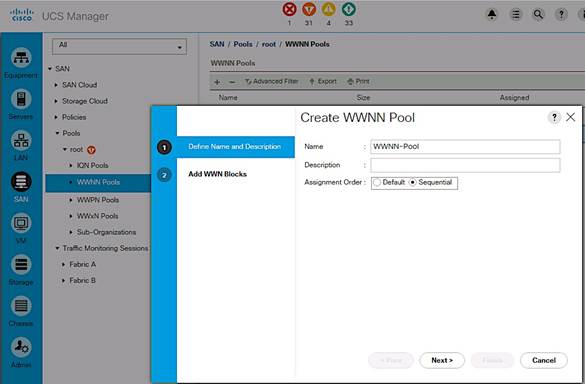

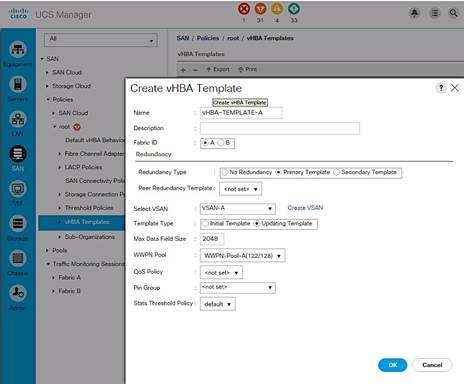

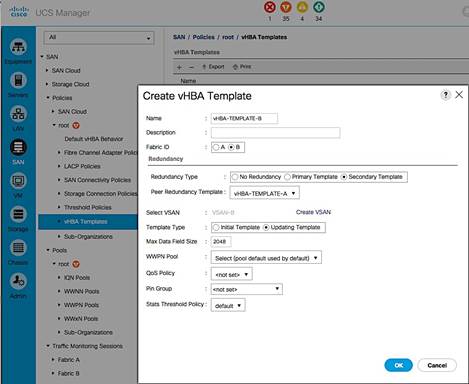

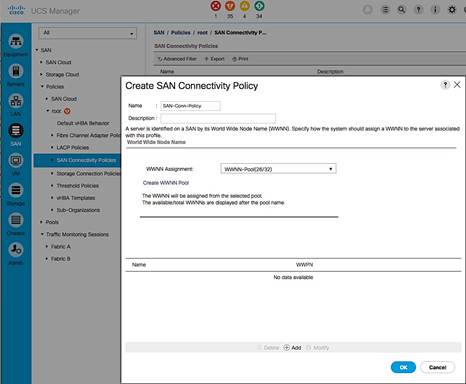

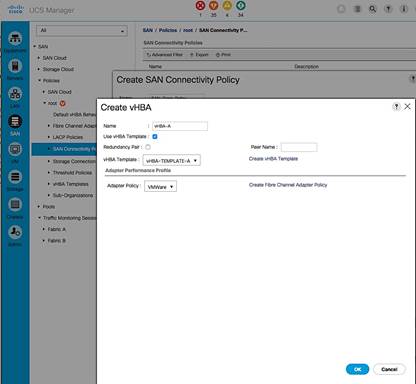

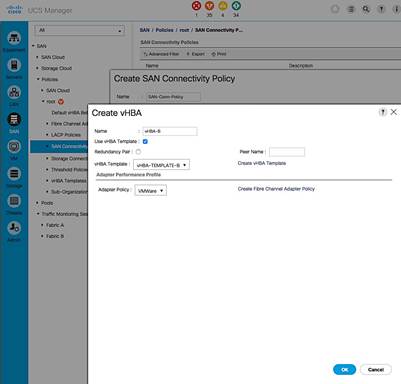

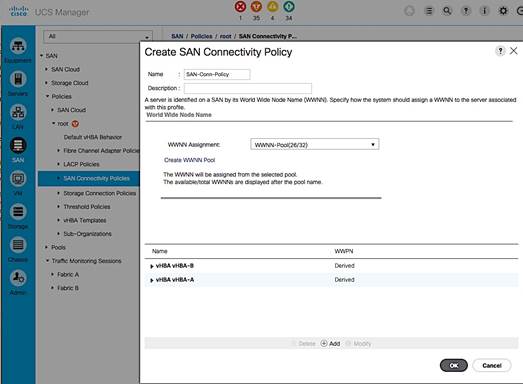

Create SAN Connectivity Policy

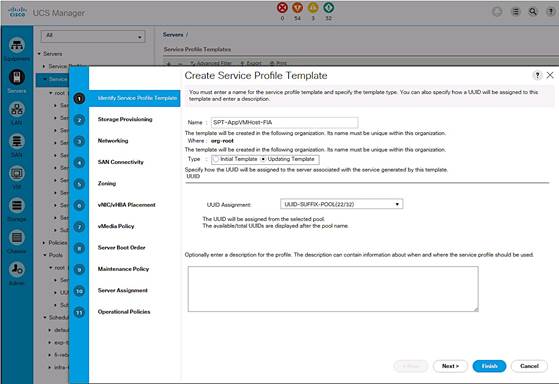

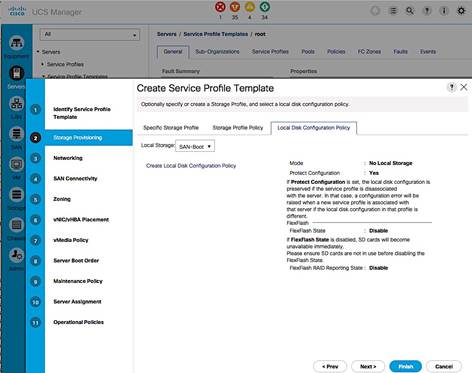

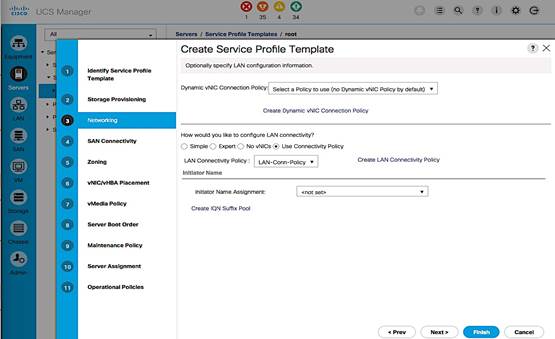

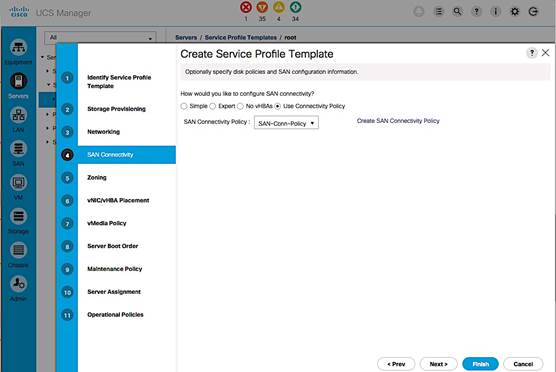

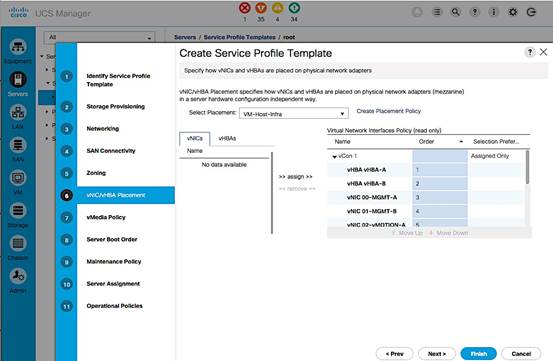

Cisco UCS Configuration - Service Profile Template

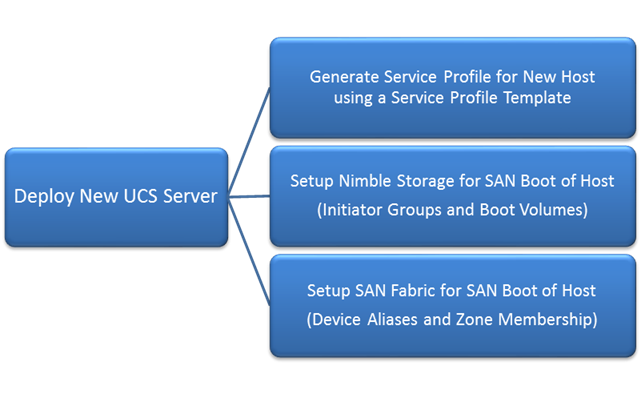

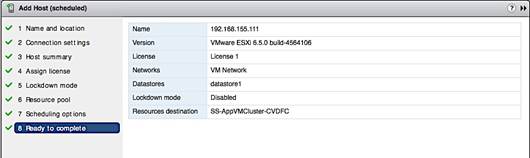

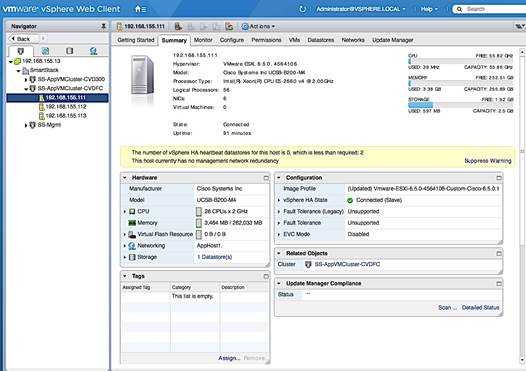

Solution Deployment – Deploy New Host

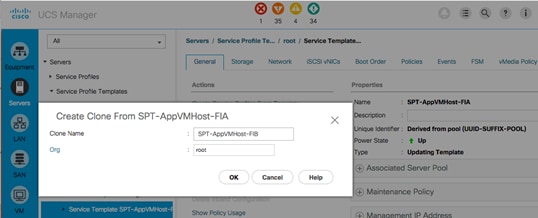

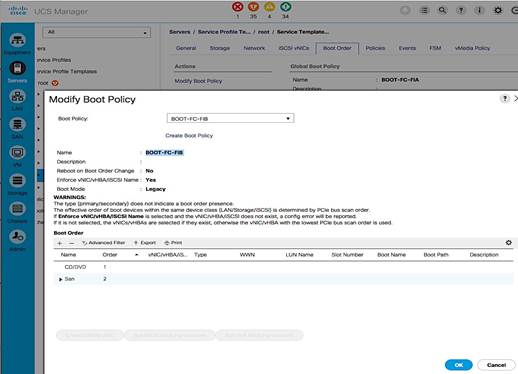

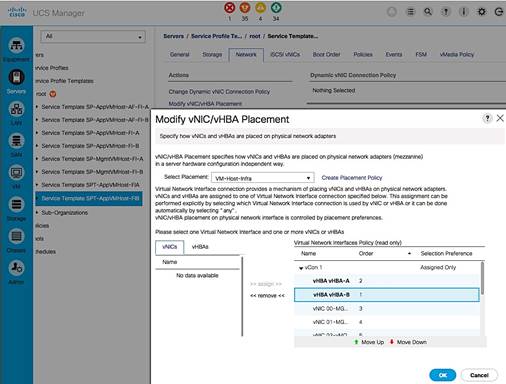

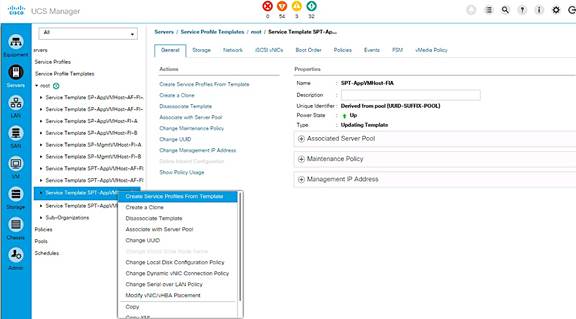

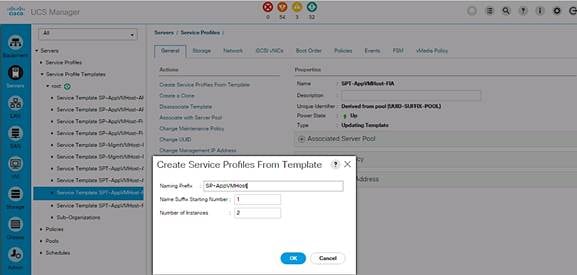

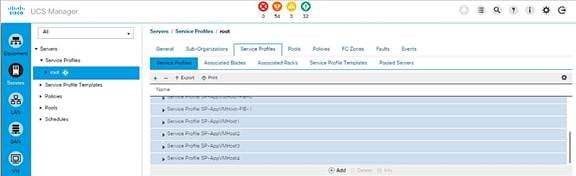

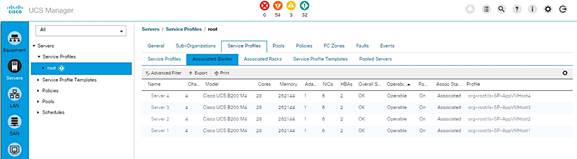

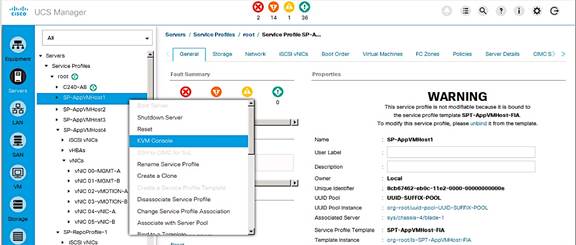

Generate Service Profile for the New Host using a Service Profile Template.

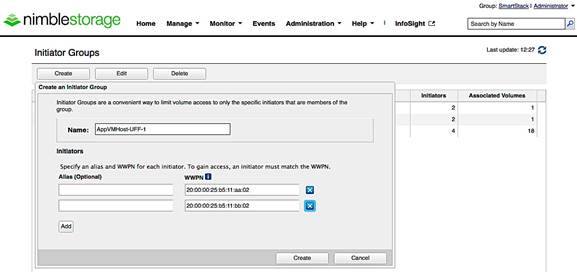

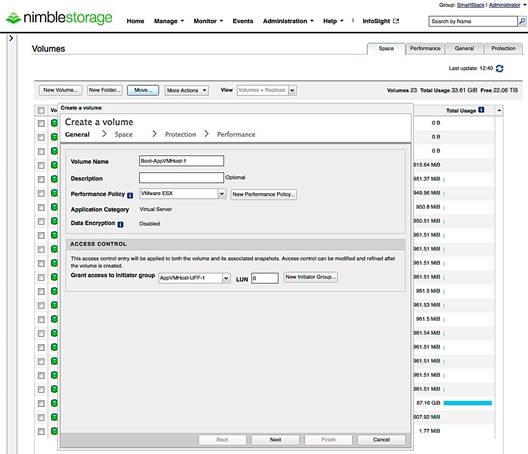

Setup Nimble Storage Array for SAN Boot of Host

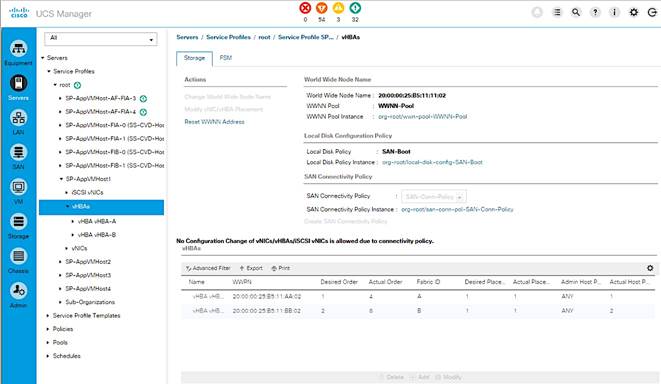

Collect Initiator WWPNs from the Host Service Profile

Create Initiator Group for Host

Setup SAN Fabric for SAN Boot of Host

Configure Device Aliases for the New Host

Create Zones and Zoneset for the New Host

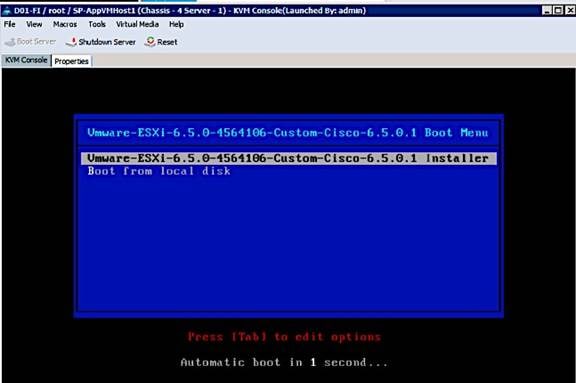

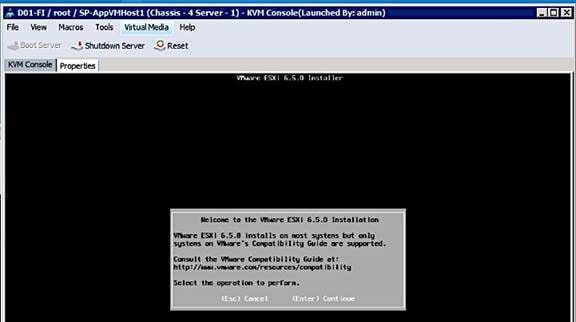

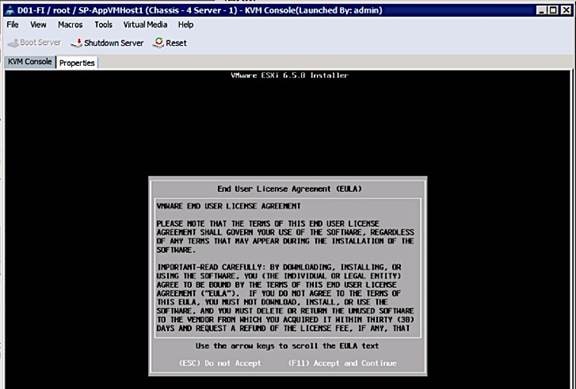

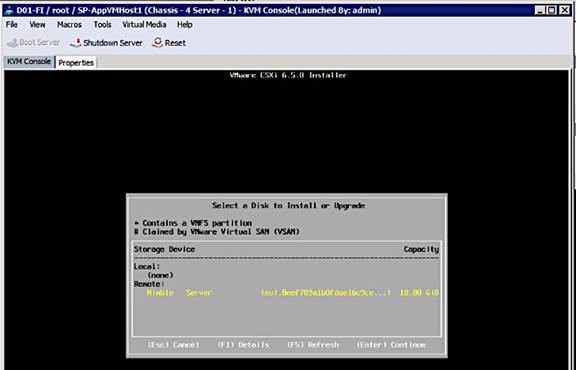

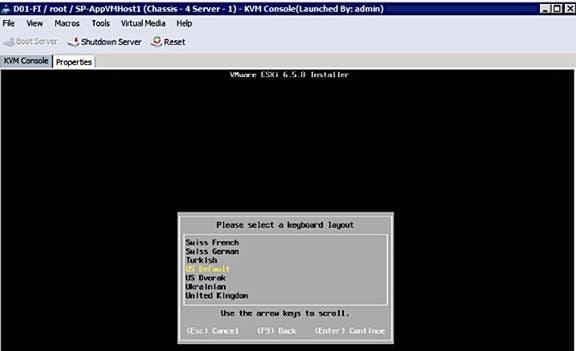

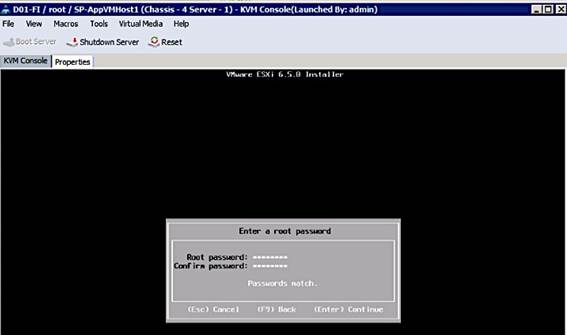

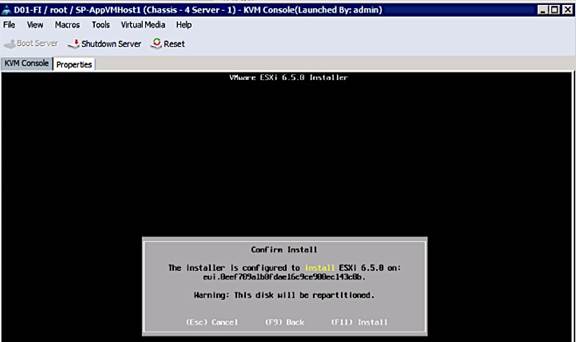

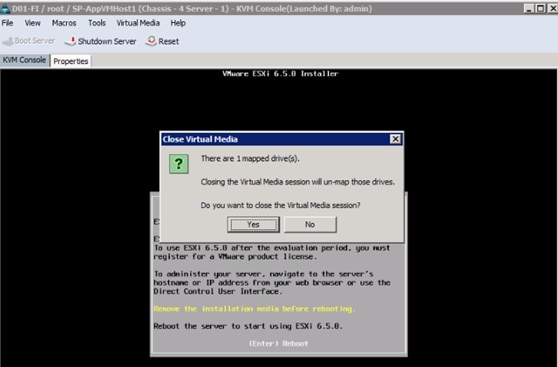

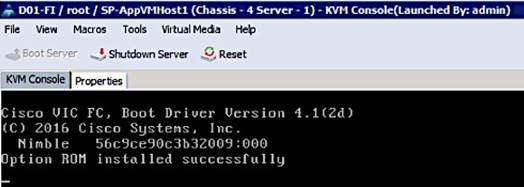

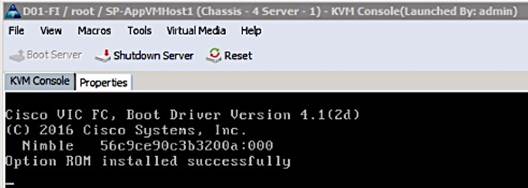

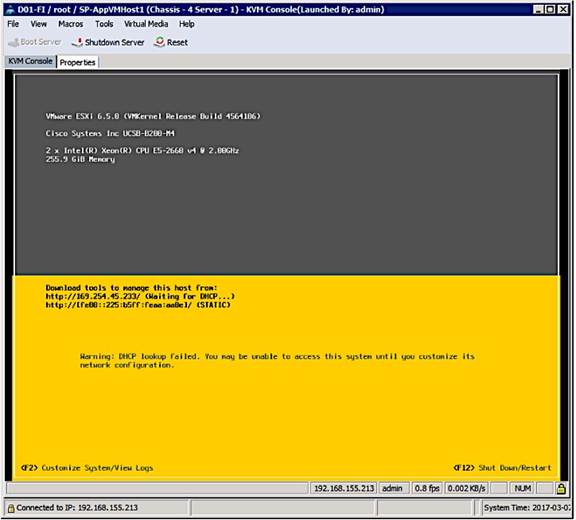

SAN Boot and Install of ESXi on Host

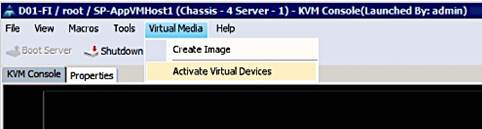

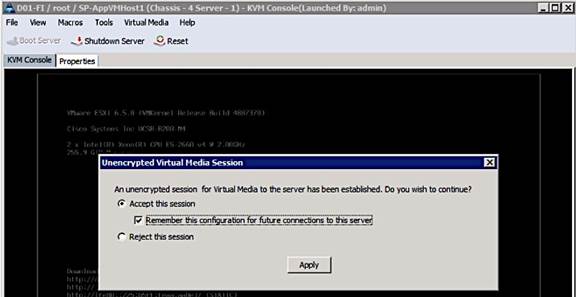

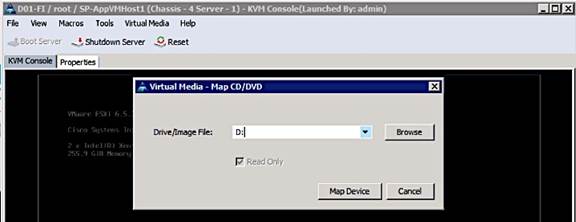

KVM Console into Host from Cisco UCSM Web Interface

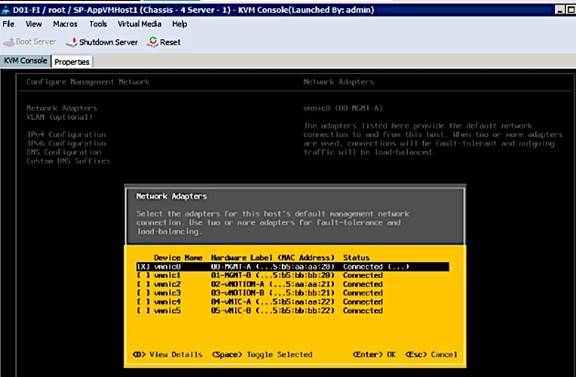

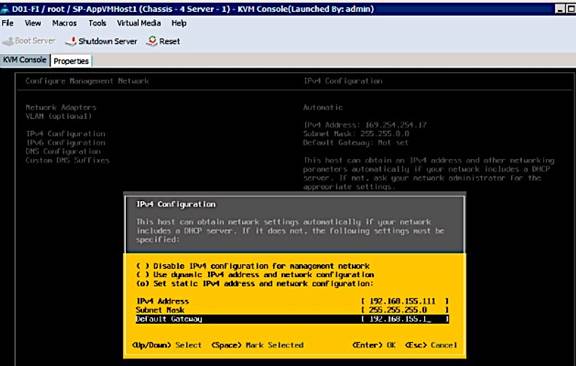

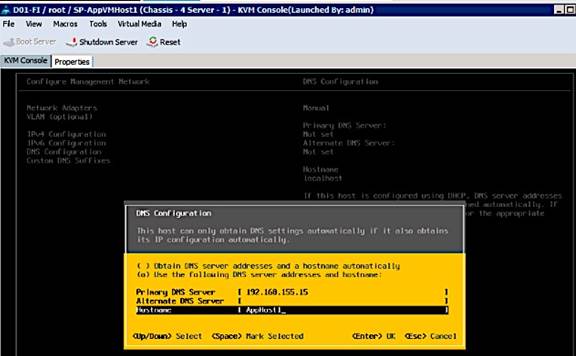

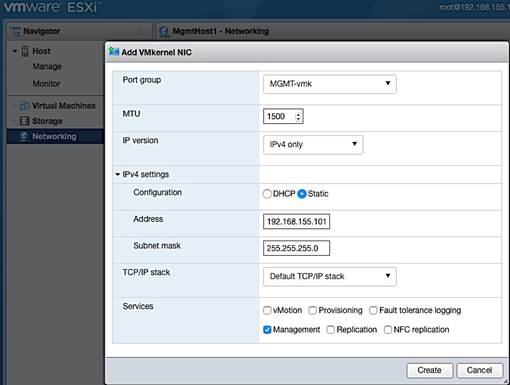

Setup ESXi Host for IP Management

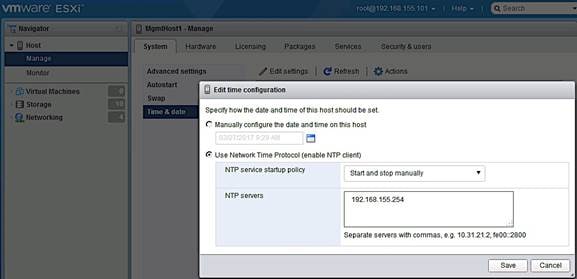

Provision New Host without vCenter

Access Host Directly using vSphere Web Client

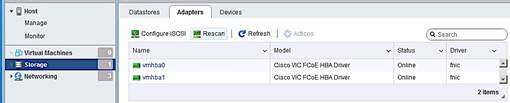

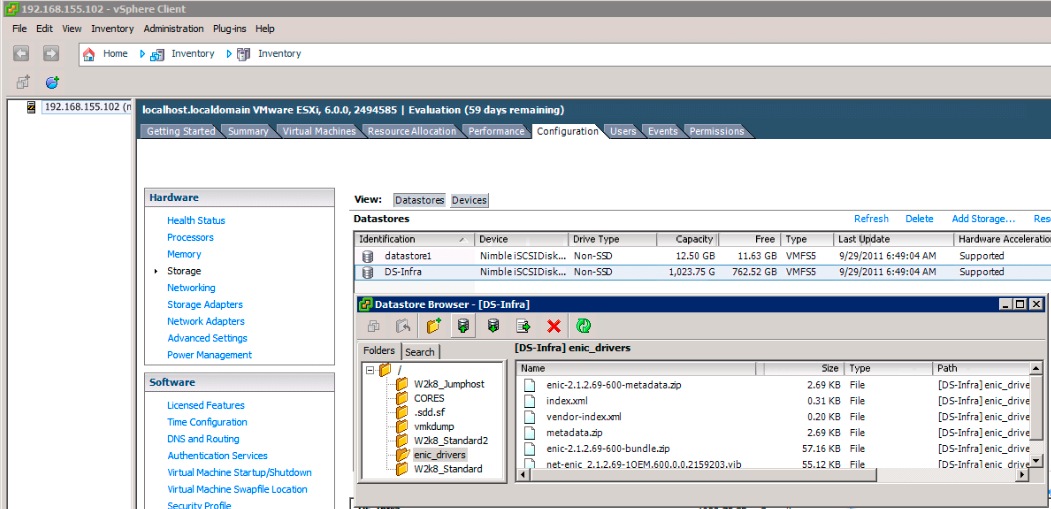

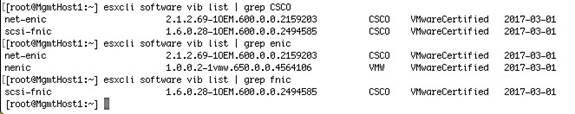

Update FNIC and ENIC Drivers on ESXi Host

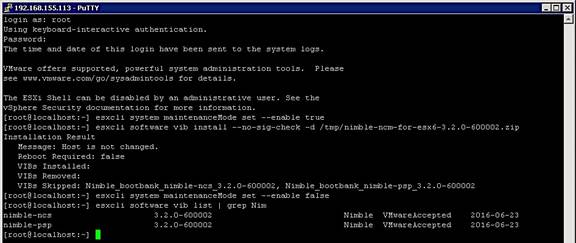

Install Nimble Connection Manager (NCM)

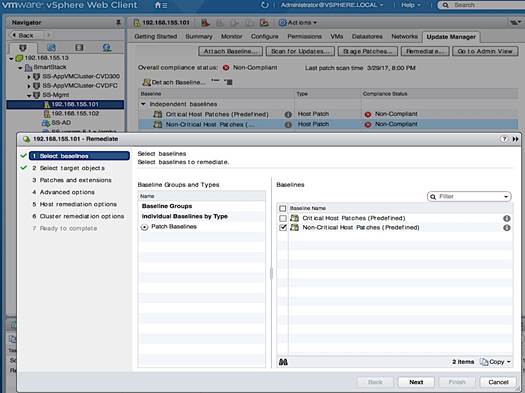

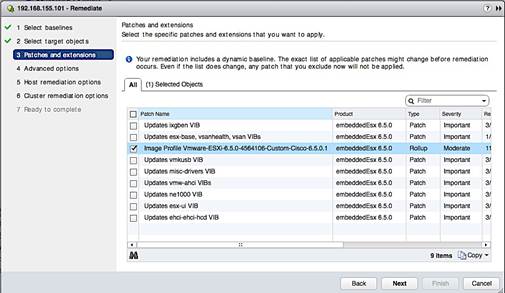

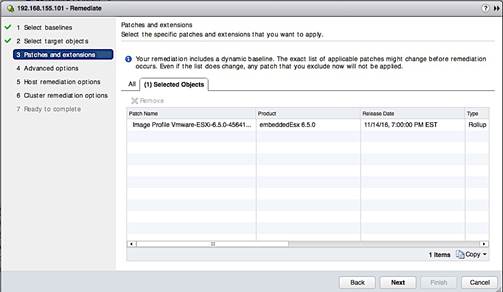

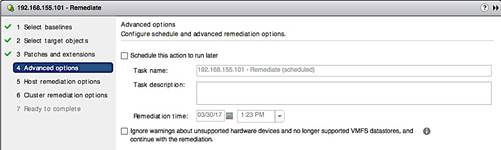

Solution Deployment – vSphere Setup

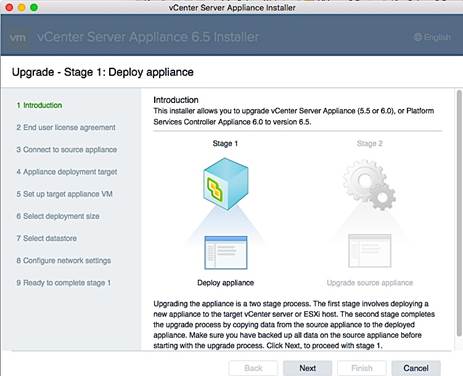

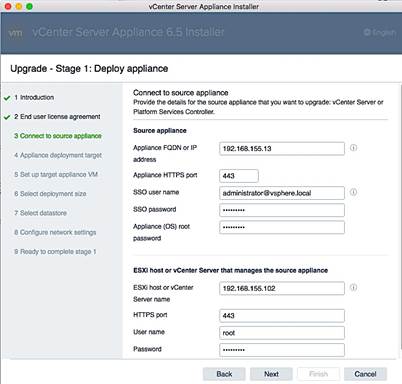

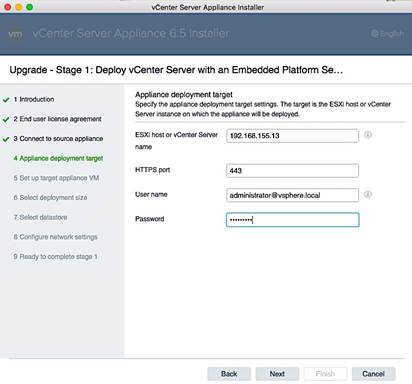

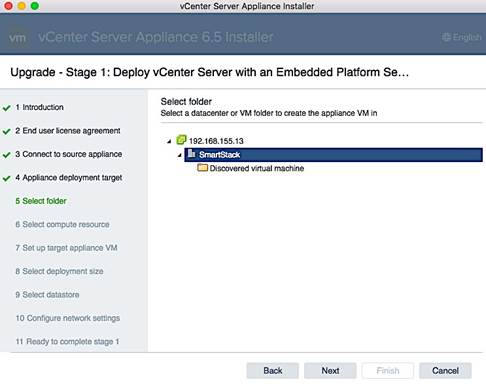

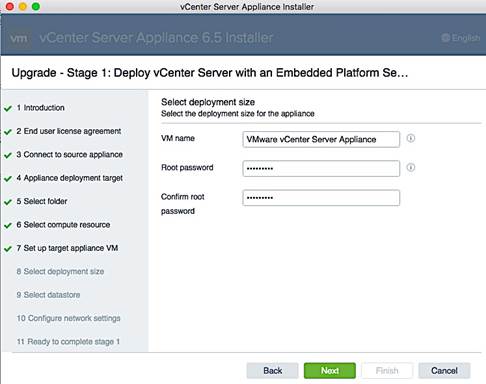

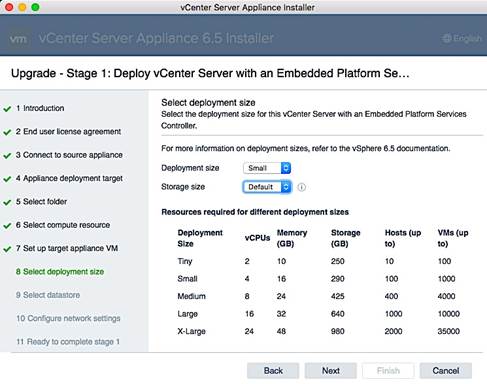

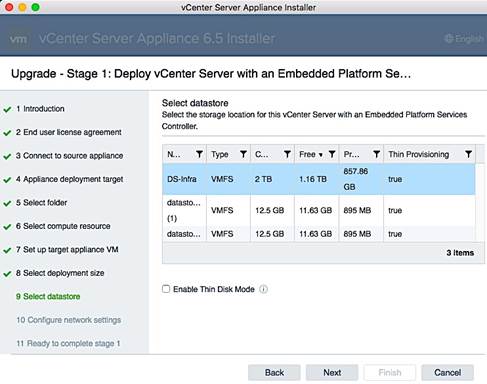

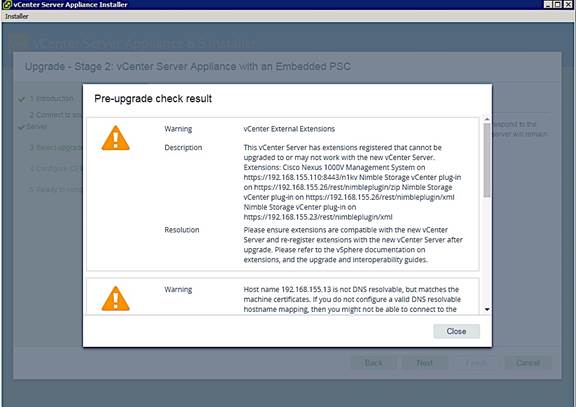

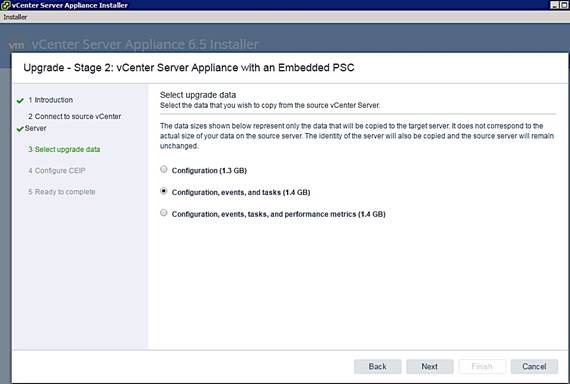

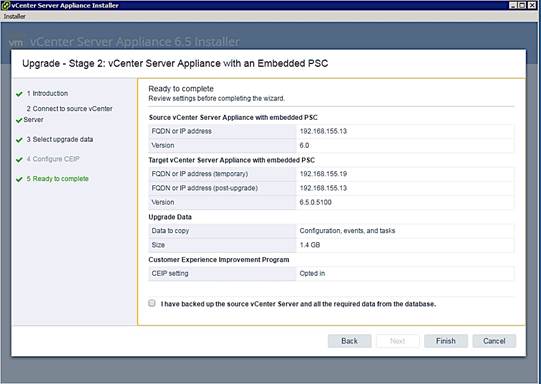

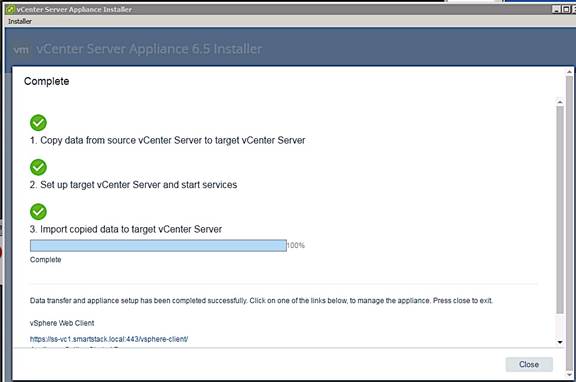

Optional: Deploy VMware vCenter Appliance 6.5

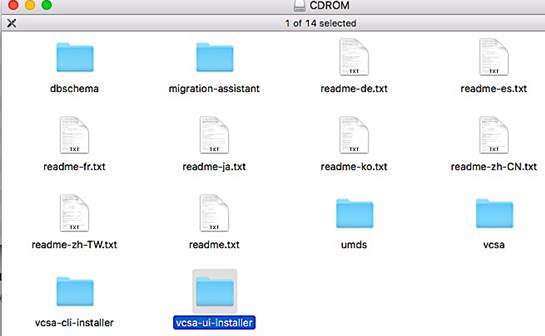

Download vCenter Server Appliance (VCSA) ISO from VMware

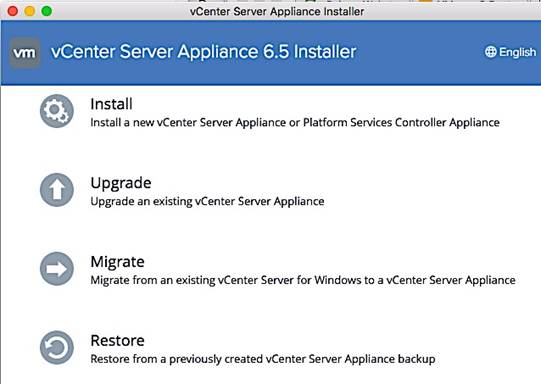

Install vCenter Server Appliance

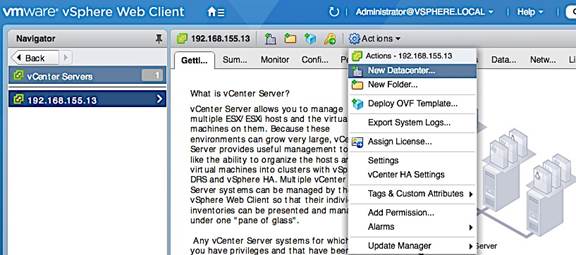

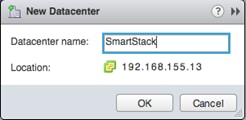

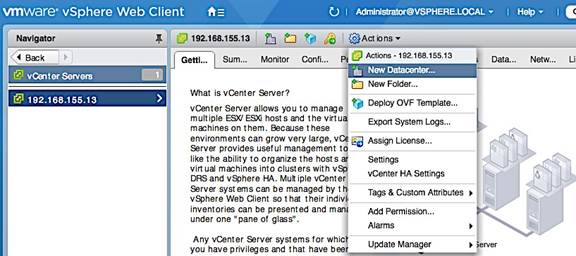

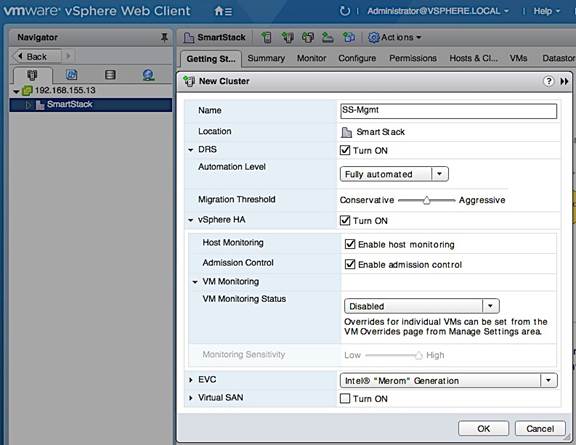

Setup vCenter for Datacenter, Cluster, DRS and HA

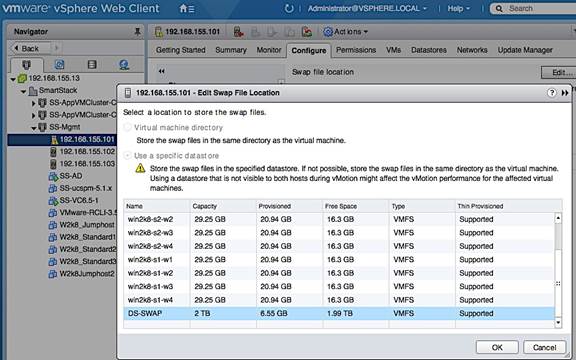

Specify Virtual Machine (VM) Swap File location – Cluster Level

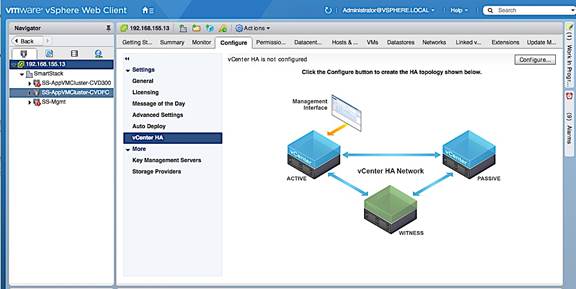

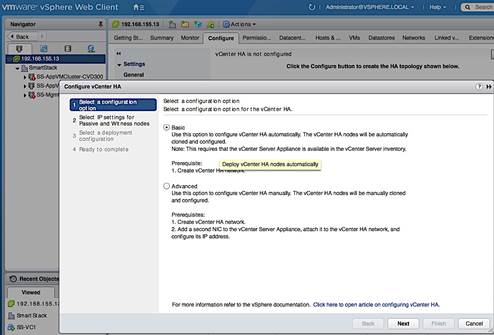

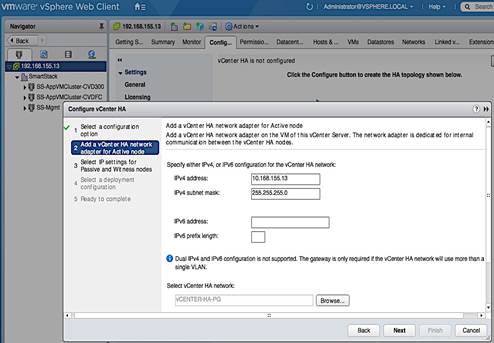

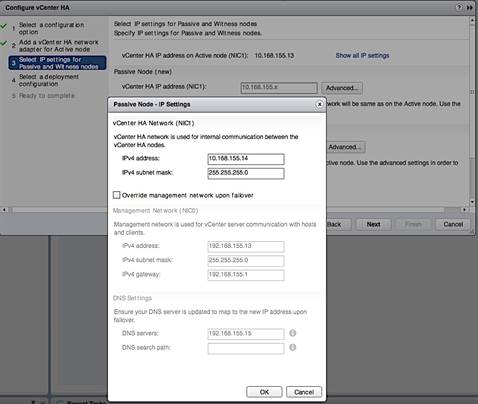

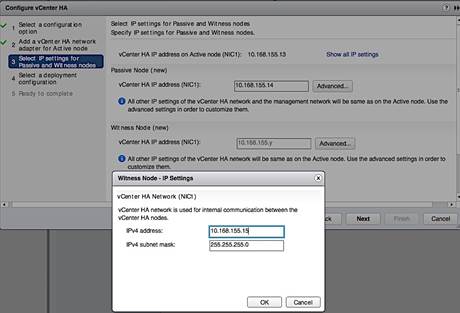

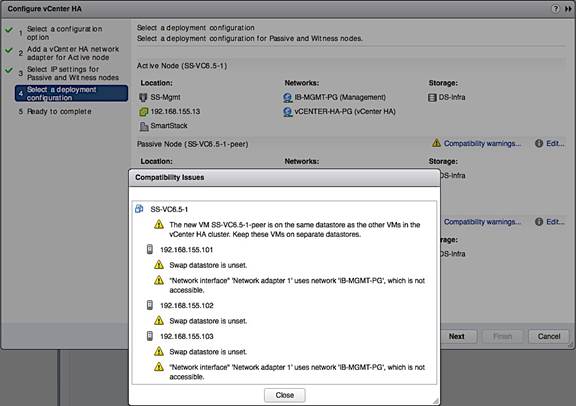

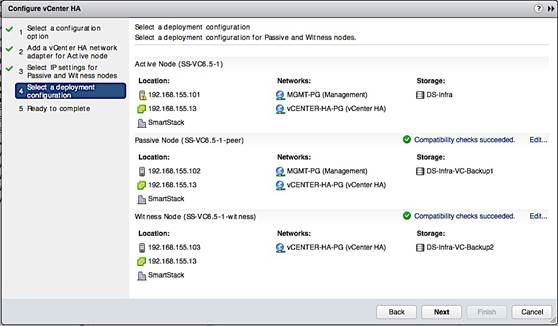

Deploy High Availability for vCenter

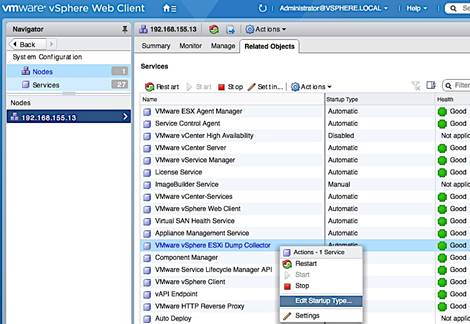

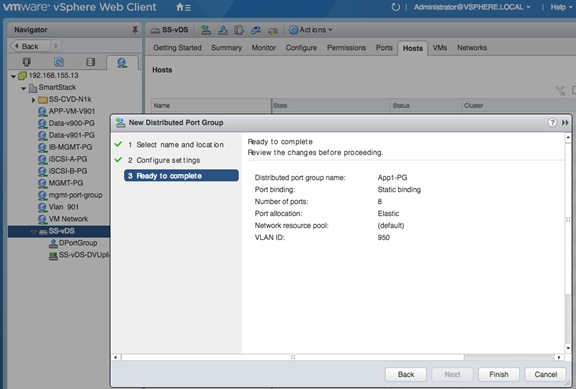

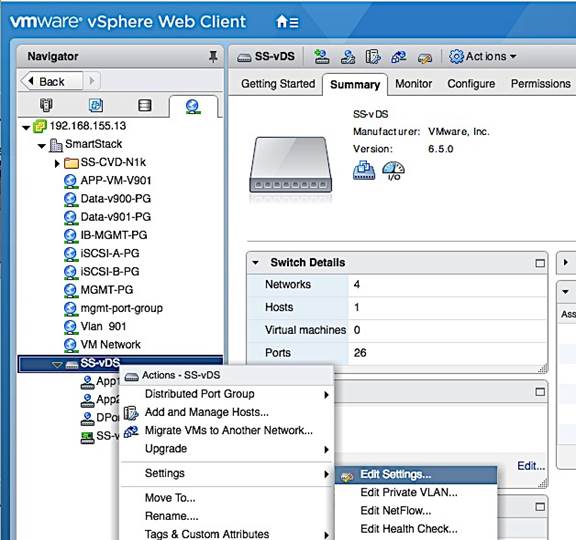

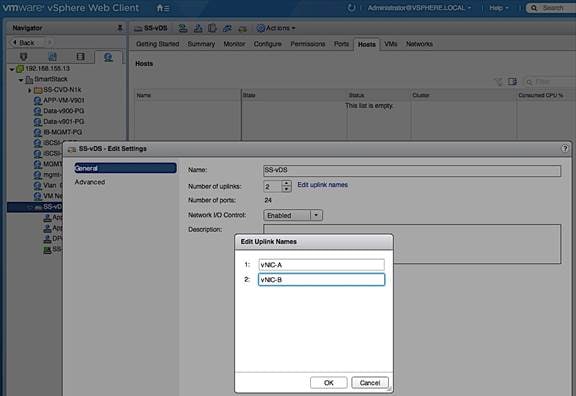

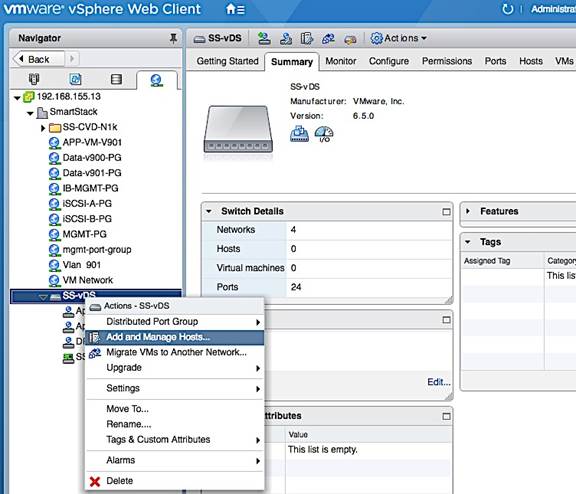

Create vSphere Distributed Switch for Application VM Traffic

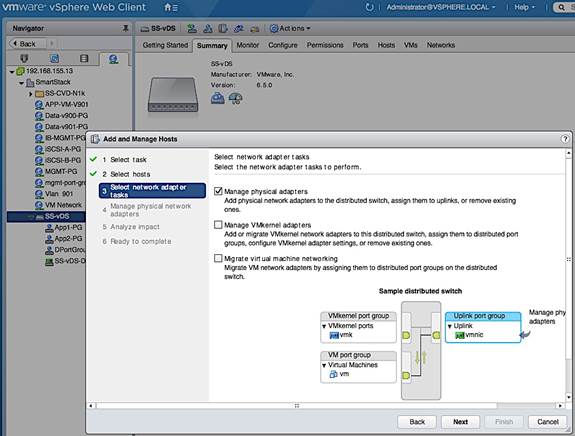

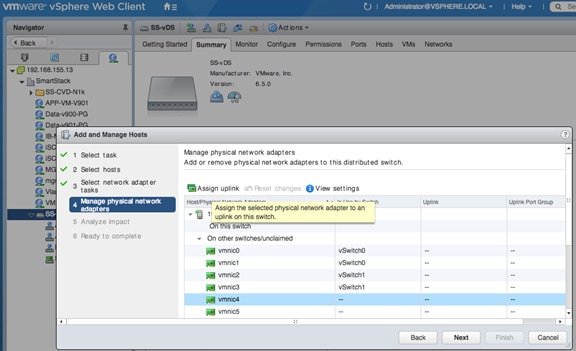

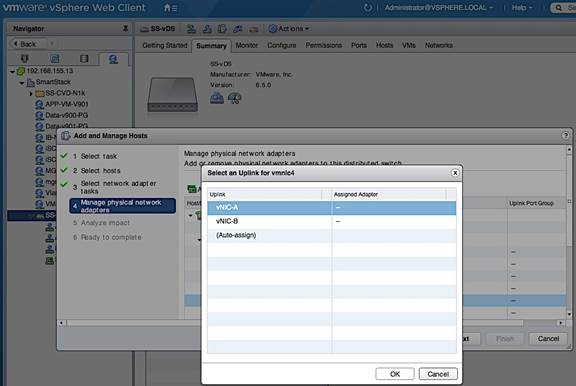

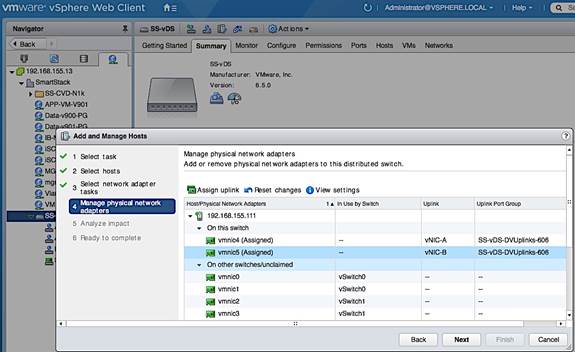

Workflow for vSphere Distributed Switch Configuration

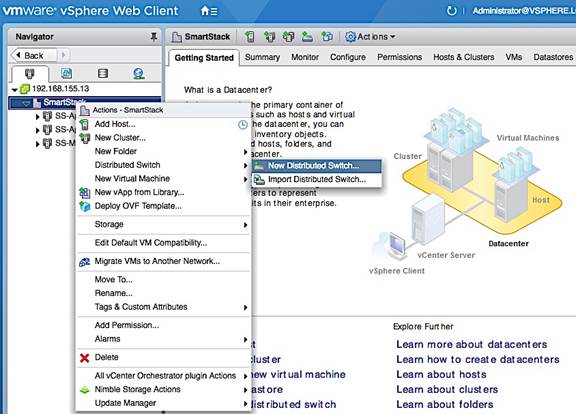

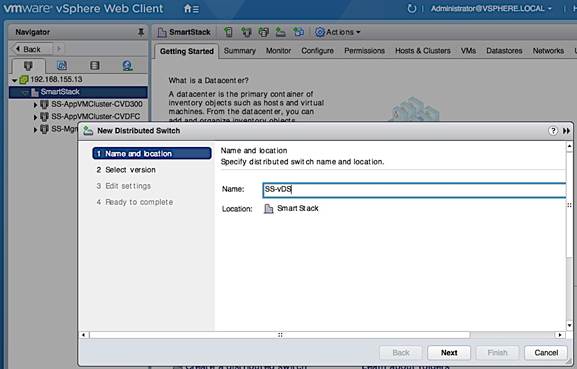

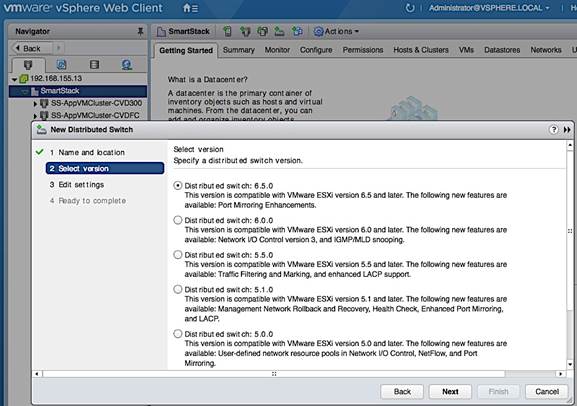

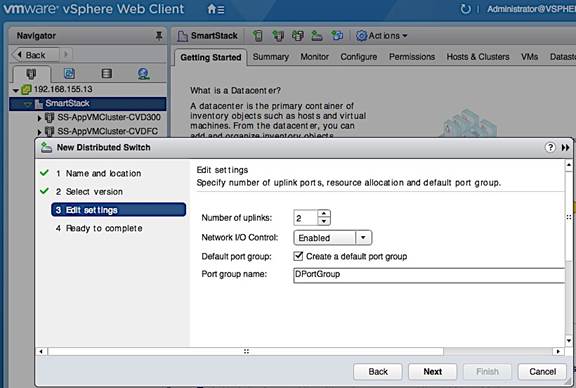

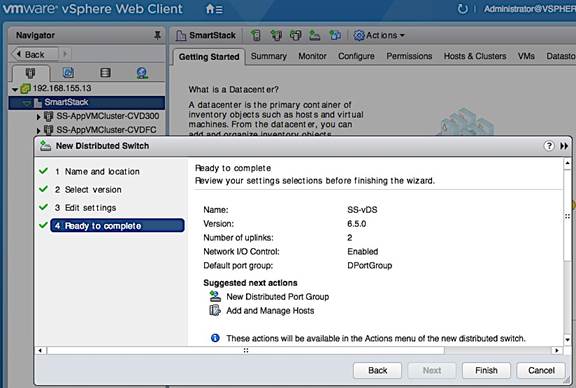

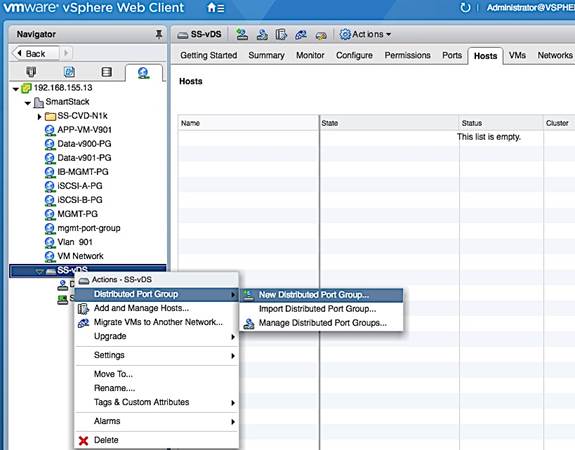

Create vSphere Distributed Switch

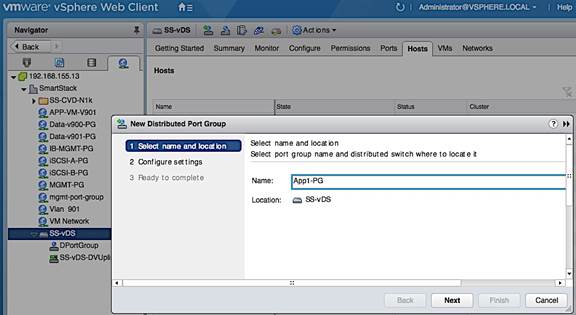

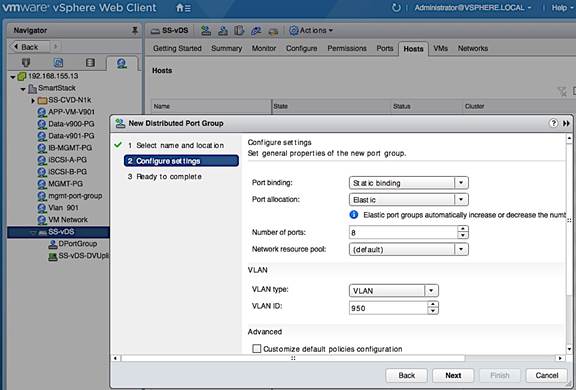

Add Port Groups for Applications

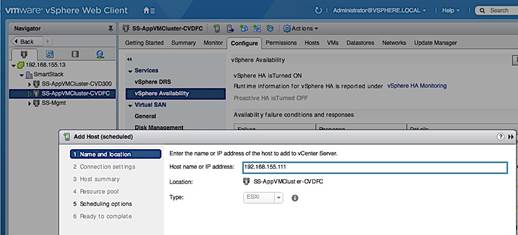

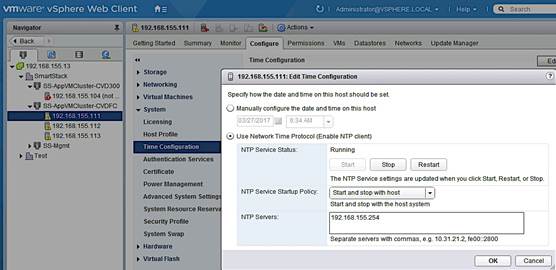

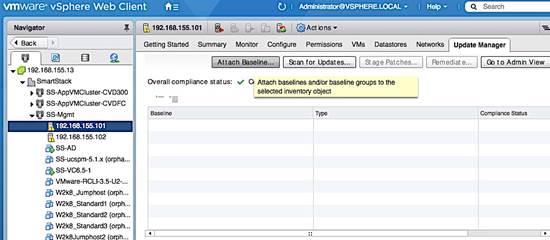

Solution Deployment – Add and Setup New Host with vCenter

Update FNIC and ENIC Drivers on an ESXi Host

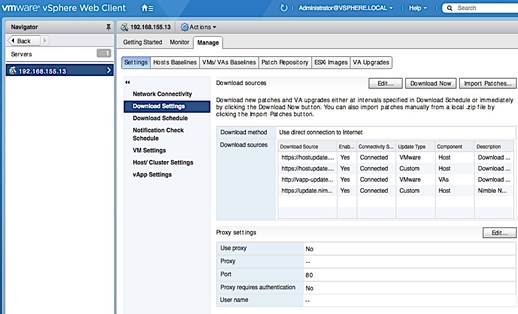

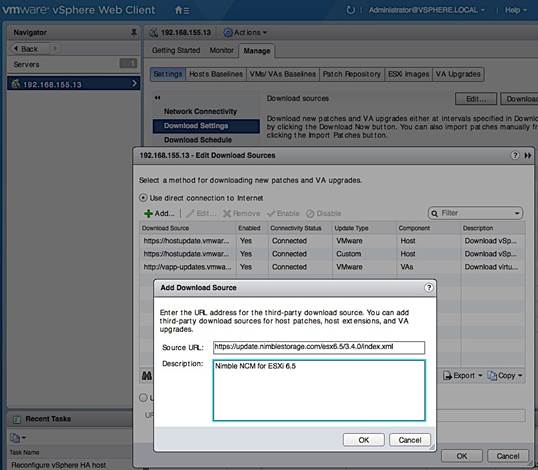

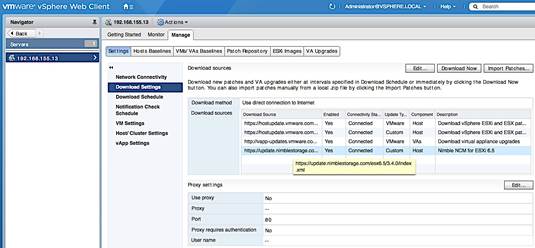

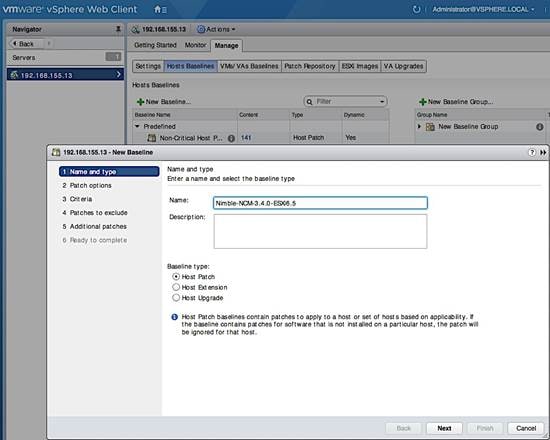

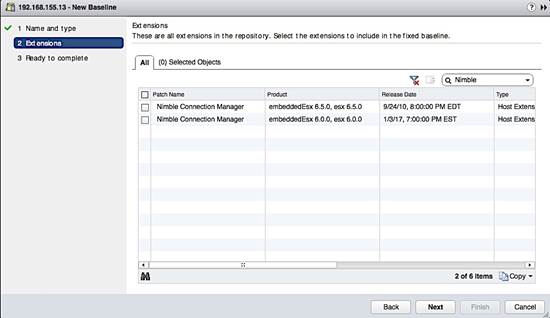

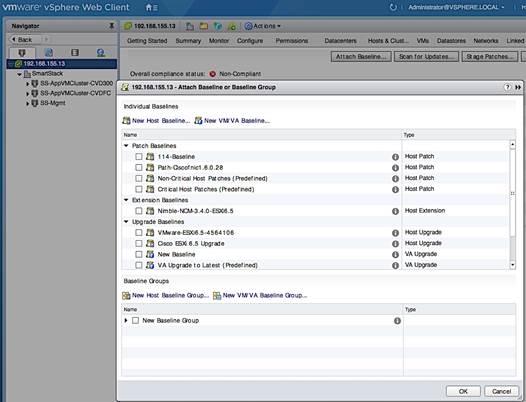

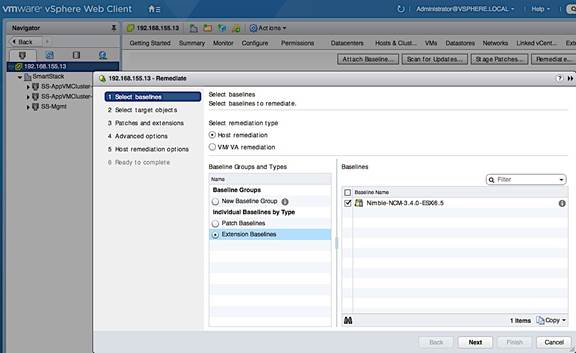

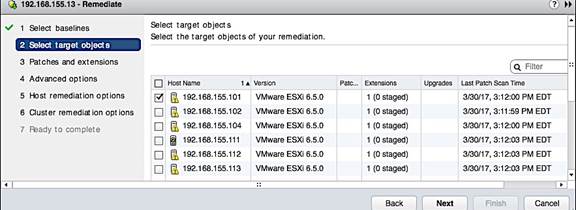

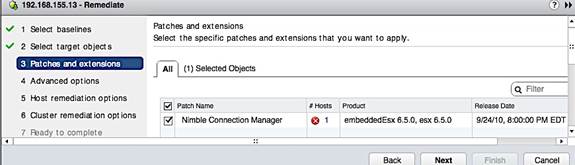

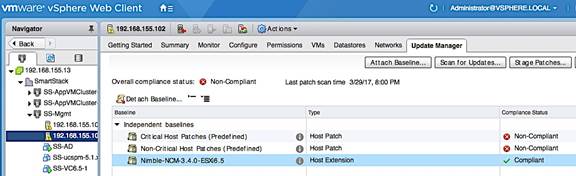

Install Nimble Connection Manager (NCM)

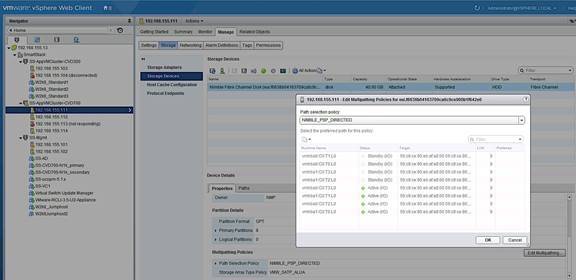

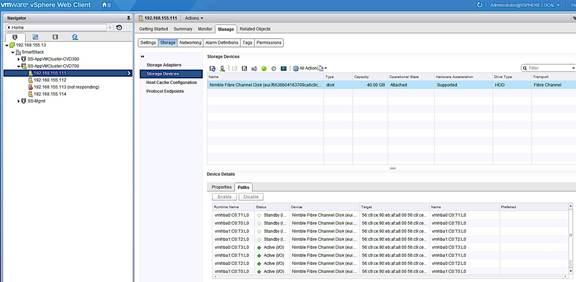

Verify Storage Configuration Post-NCM Install

Register Nimble vCenter Plugin

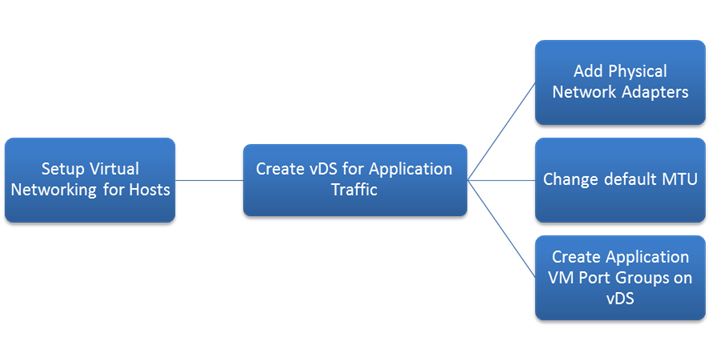

Specify Virtual Machine (VM) Swap File location – Host side

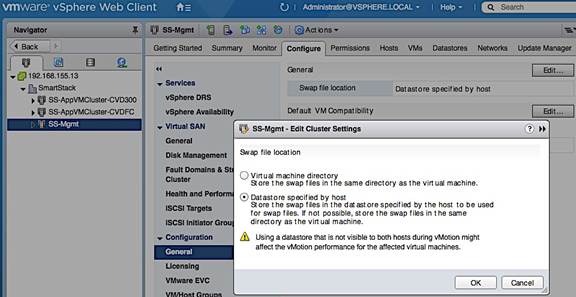

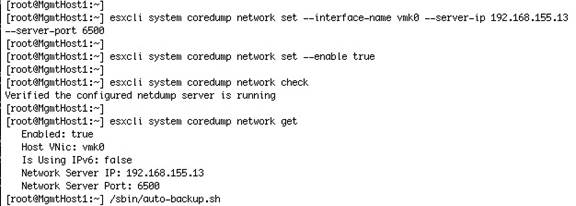

Setup ESXi Dump Collector - Host side

Configure ESXi Dump Collector using ESX Shell/CLI

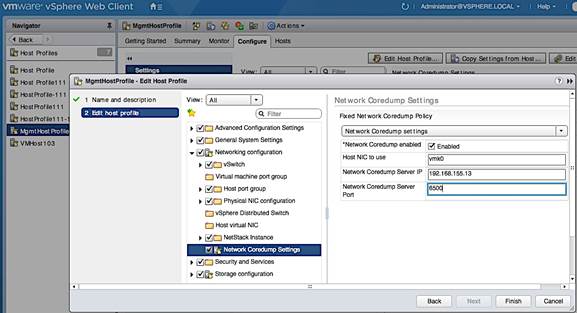

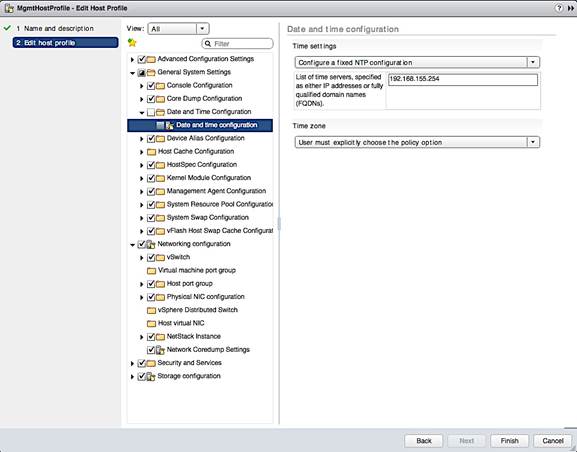

Configure ESXi Dump Collector using a Host Profile

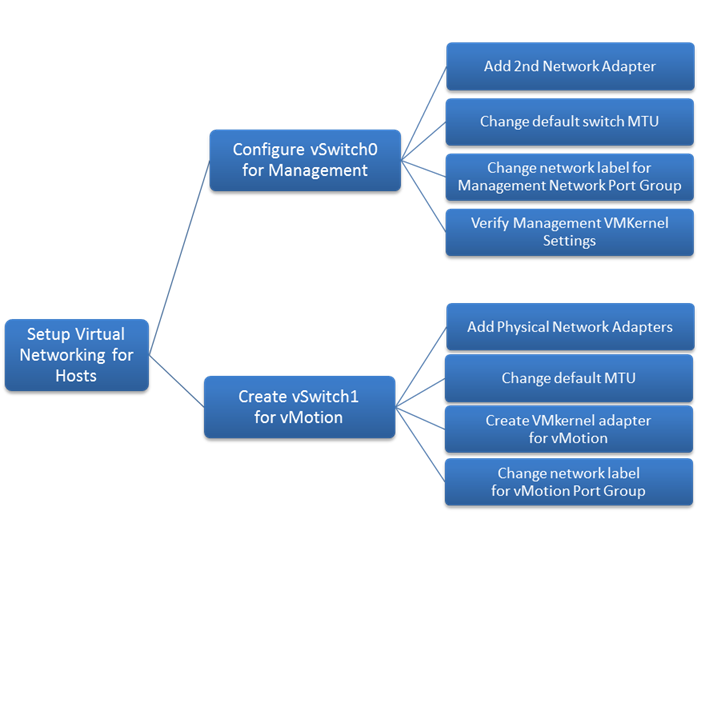

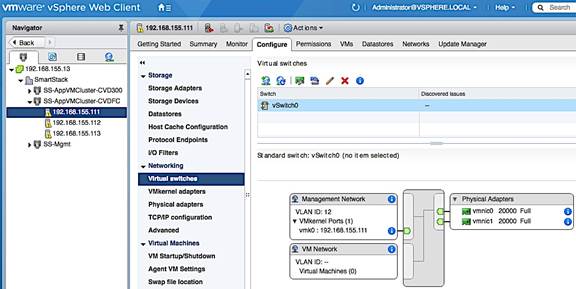

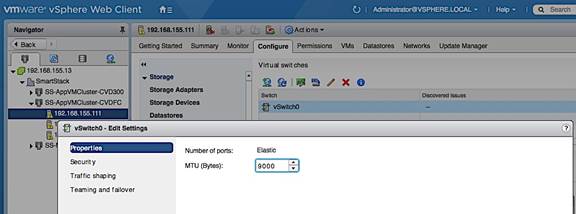

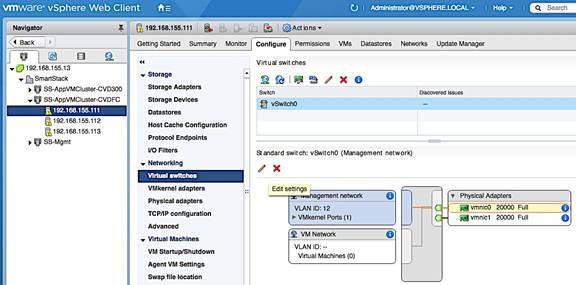

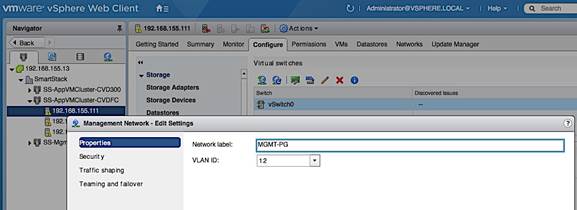

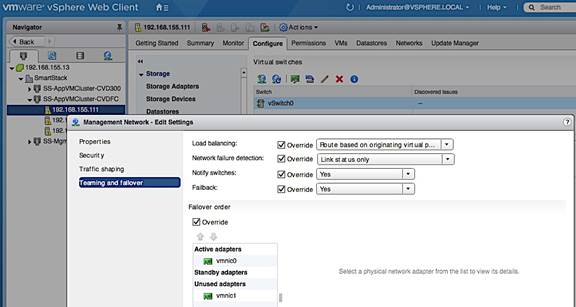

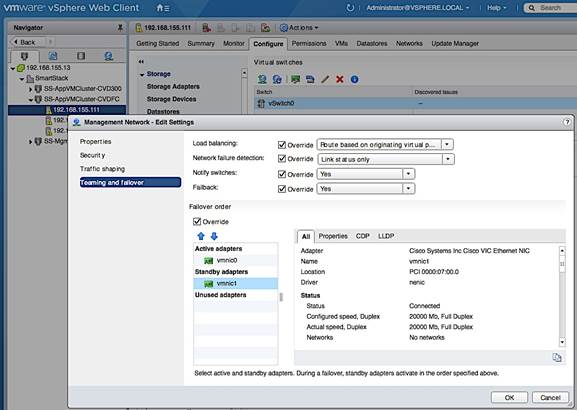

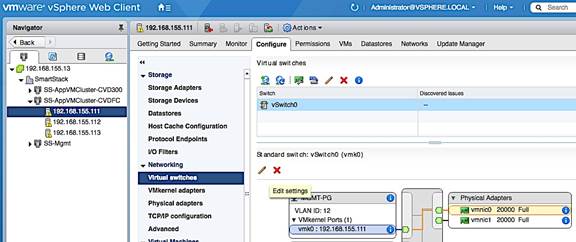

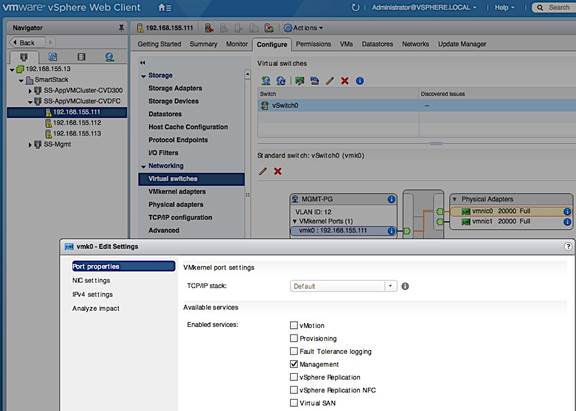

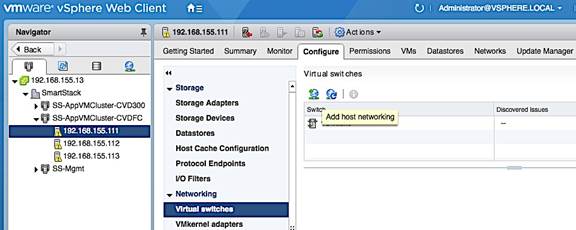

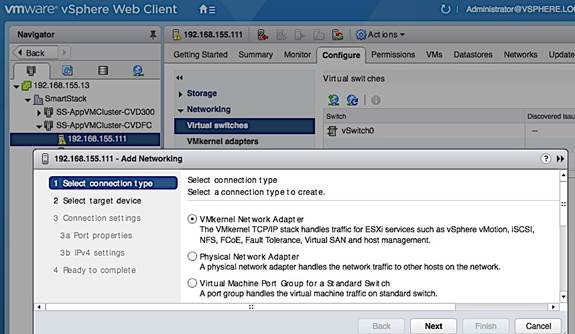

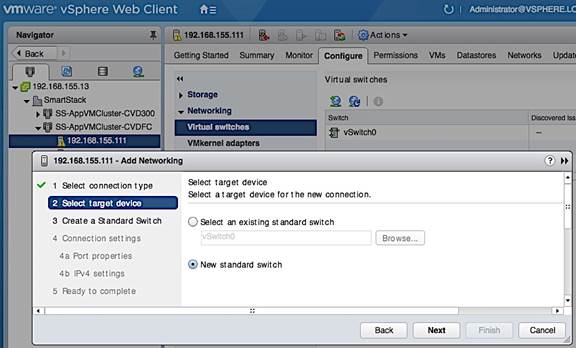

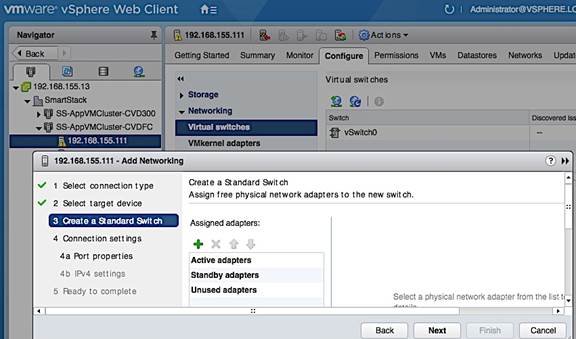

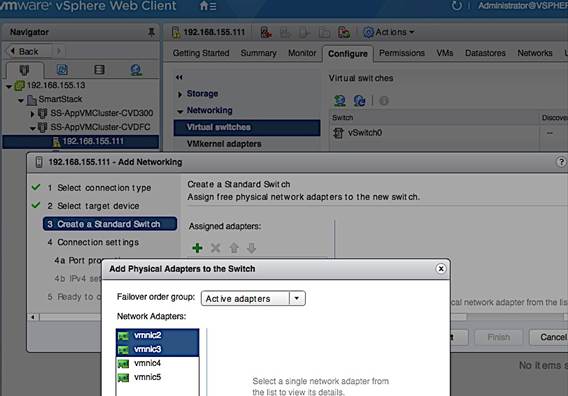

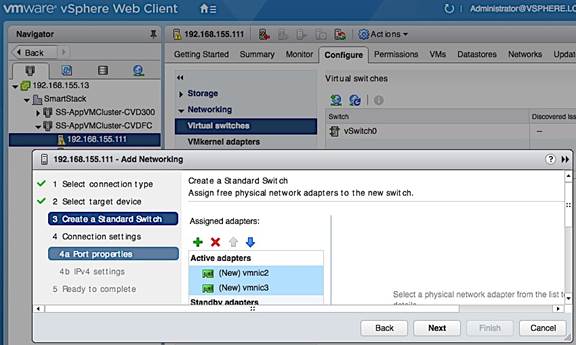

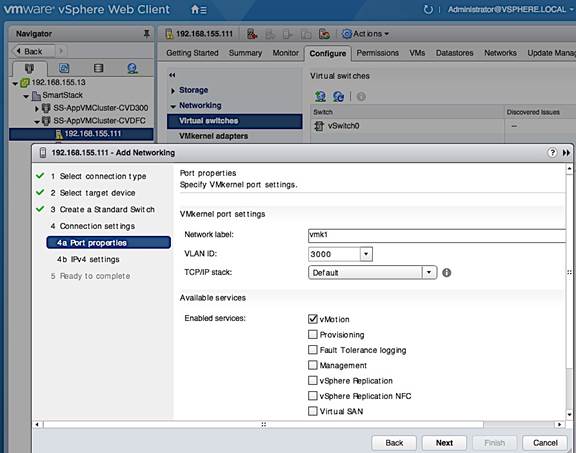

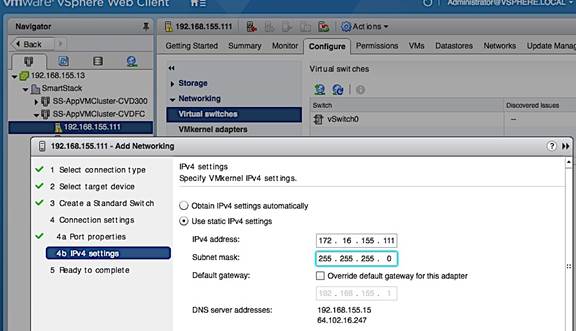

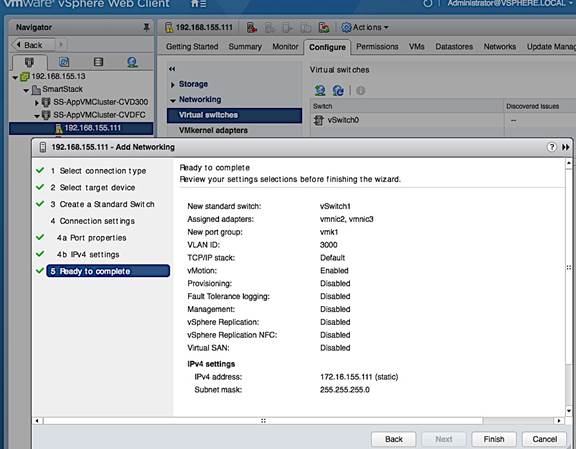

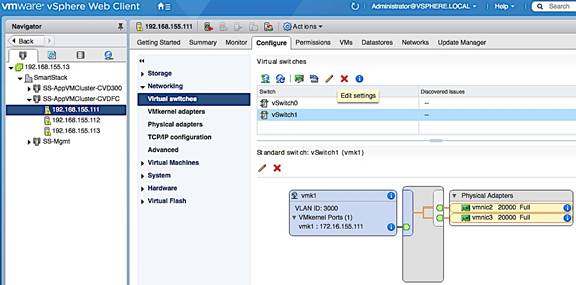

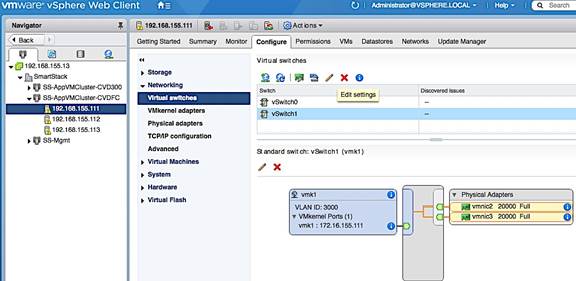

Setup vSphere vSwitch Networking on Host for Management and vMotion

Workflow for vSphere vSwitch Networking Setup

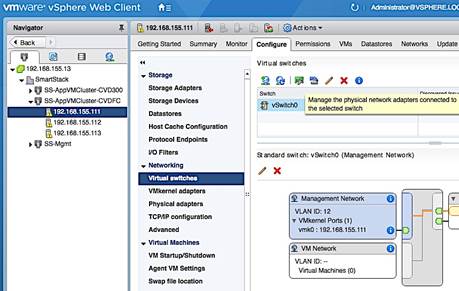

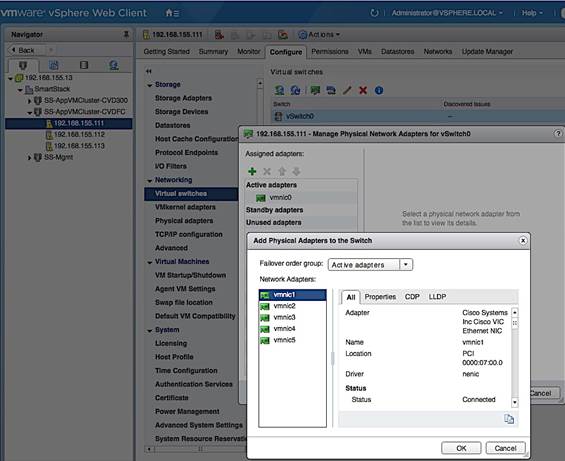

Configure vSwitch0 for Management

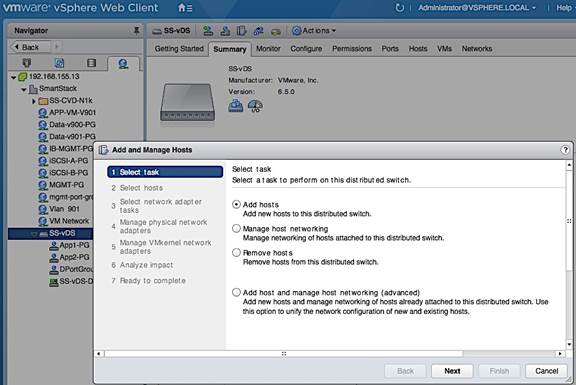

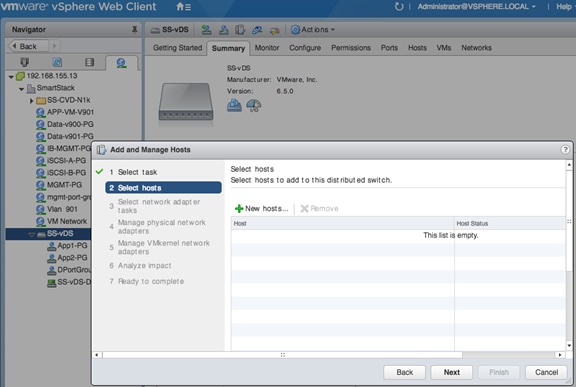

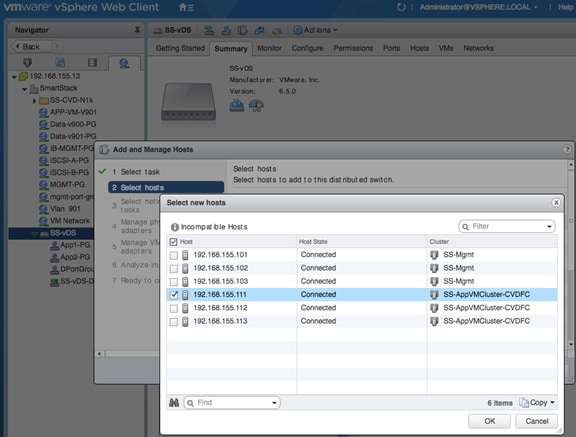

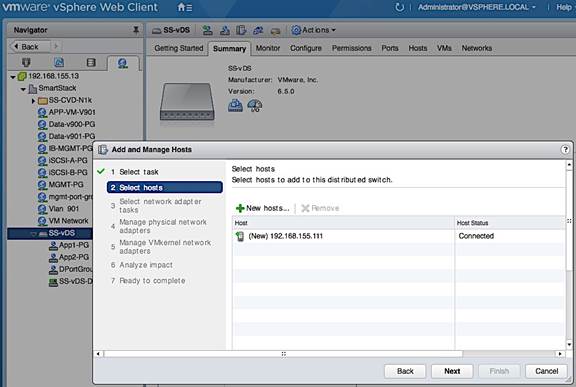

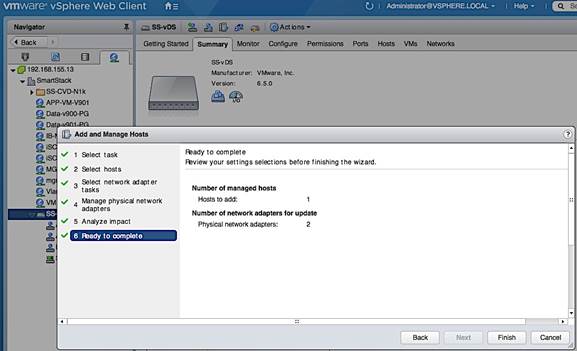

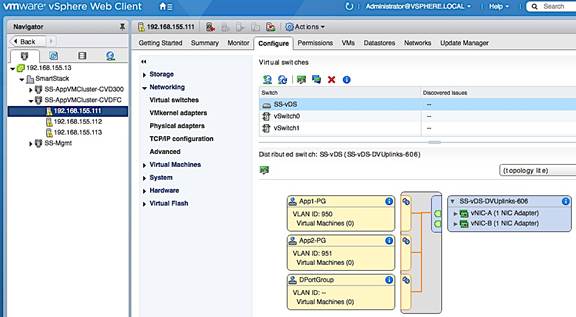

Add Host to vSphere Distributed Switch for Application VMs

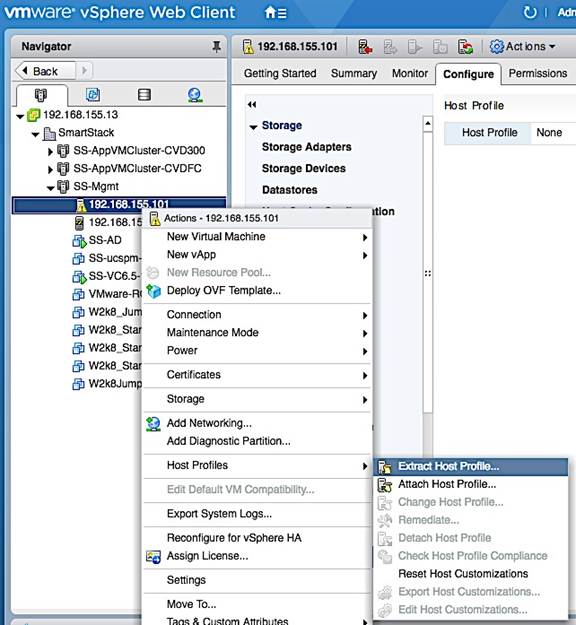

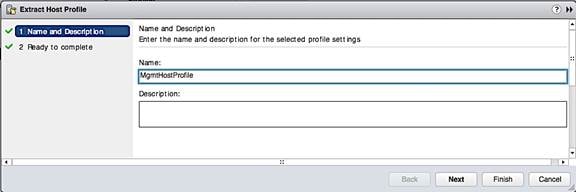

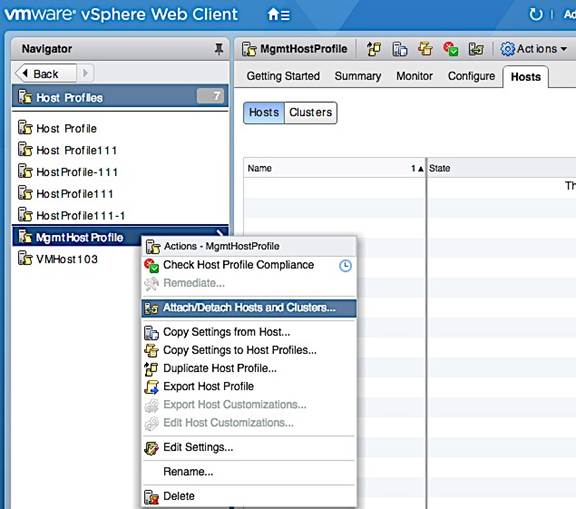

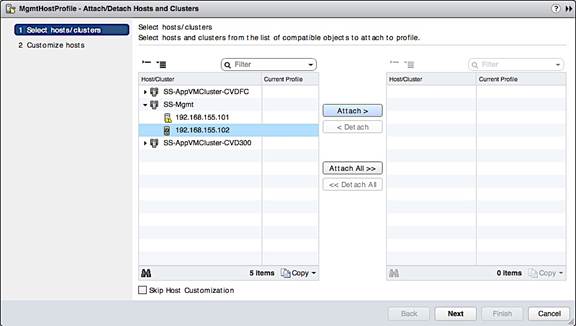

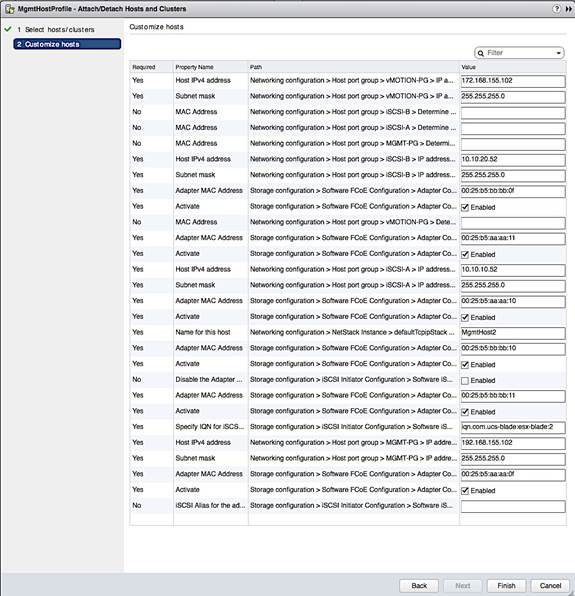

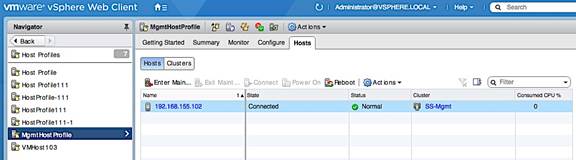

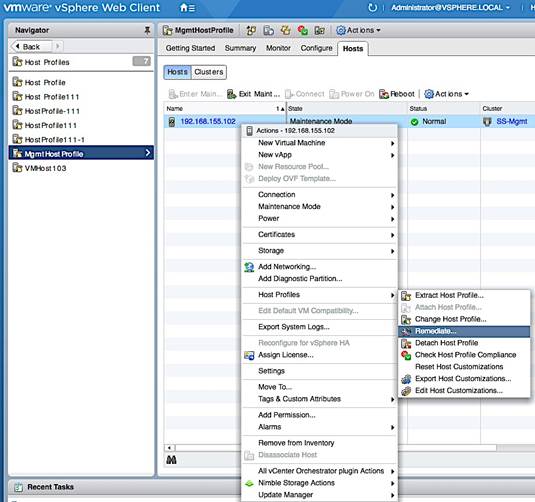

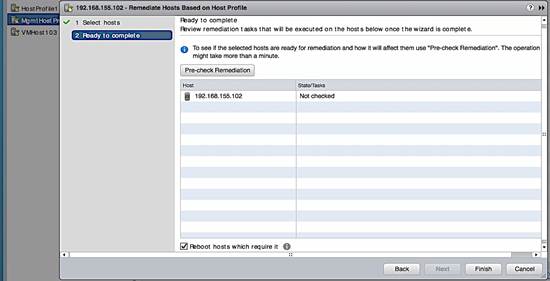

Extract Host Profile to use as a template deploying additional hosts

Solution Deployment - Deploy High Availability for vCenter (Optional)

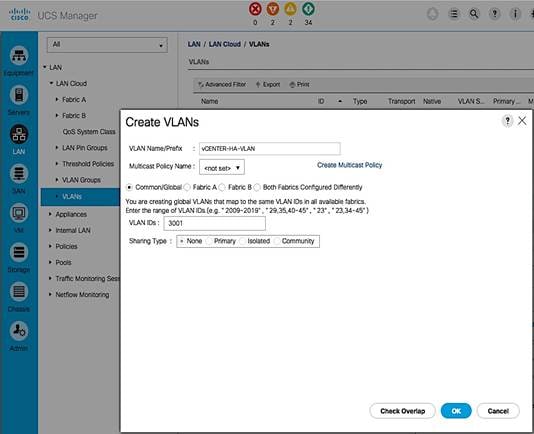

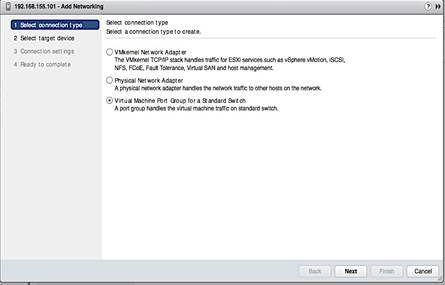

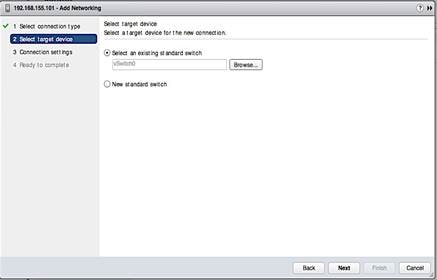

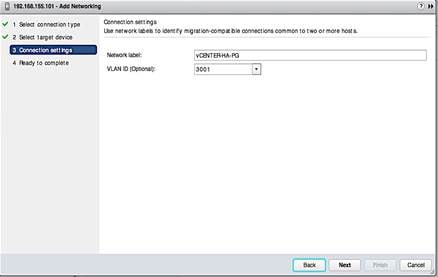

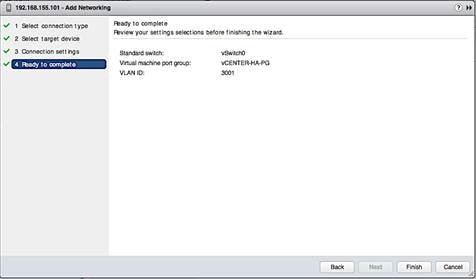

Add vCenter HA VLAN to Cisco UCS Fabric

Cisco Validated Designs (CVD) are systems and solutions that have been designed, tested and documented to facilitate and accelerate customer deployments. These designs incorporate a wide range of technologies and products into a portfolio of solutions that have been developed to address the business needs of a customer. CVDs deliver a validated design, documentation and support information to guide customers from design to deployment.

Cisco and Nimble have partnered to deliver a series of integrated solutions for Enterprise and Cloud datacenters by combining Cisco Unified Computing System (UCS), Cisco switching, and Nimble Storage arrays. The Cisco-Nimble solution covered in this document incorporates compute, network and storage best practices to deliver a resilient, scalable and flexible datacenter architecture. The design uses Cisco UCS servers for compute, VMware vSphere 6.5 hypervisor, Cisco Nexus 9000 series as the network platform and Cisco MDS 9000 Series switches for the Fibre Channel (FC) network to connect to the Nimble Storage AF7000 all flash and CS5000 adaptive flash arrays. The solution ensures compatibility between the components by testing the integrated architecture. The solution also provides documented design guidance, deployment guidance, and support through the planning, design, and implementation stages of a deployment.

Documentation for this CVD includes the following documents:

· Cisco-Nimble Solution Design Guide

· Cisco-Nimble Solution Deployment Guide

This document serves as the Cisco-Nimble Solution Deployment Guide. The Design Guide associated with this deployment guide can be found here.

Introduction

This document outlines the deployment procedures for implementing a Cisco-Nimble infrastructure solution based on VMware vSphere 6.5, Cisco UCS, Nimble Storage Unified Flash Fabric (AF7000 all flash array and CS5000 adaptive flash array), and Cisco Nexus and MDS switches.

Audience

The intended audience for this document includes, but is not limited to, sales engineers, field consultants, professional services, customer IT staff, partner engineering, and others who want to deploy data center architecture based on Cisco UCS and Nimble Storage Unified Flash Fabric.

The architecture outlined in this document provides a converged infrastructure solution based on Cisco UCS and Nimble Storage Unified Flash Fabric for Enterprise datacenters and private cloud deployments. The Cisco-Nimble solution integrates Cisco UCS compute, Nimble AF7000 all flash and CS5000 adaptive flash storage, Cisco Nexus 9000 Series platform switches and Cisco MDS Fabric switches to deliver a foundational platform that can support a wide range of application workloads. The solution delivers compute, storage, SAN and LAN connectivity in a highly resilient, flexible and scalable architecture. The modular architecture has the flexibility to scale up by adding resources or scale out by adding multiple units or modules of this solution.

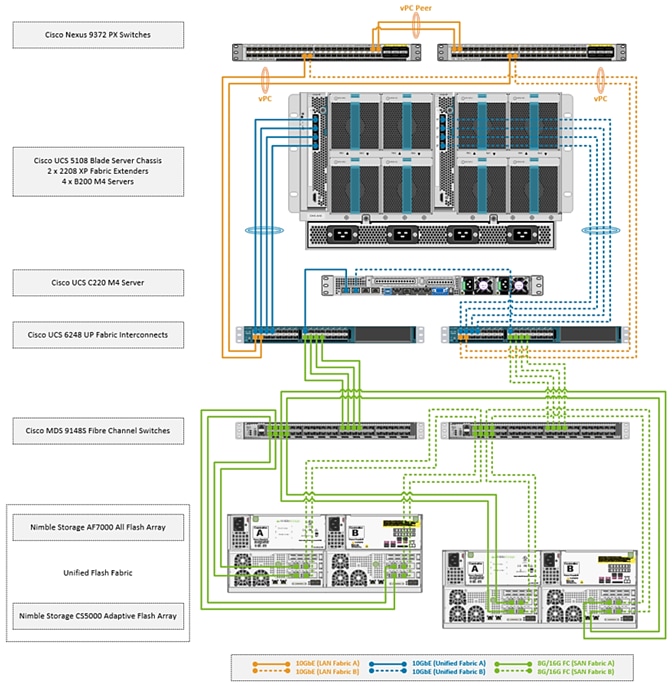

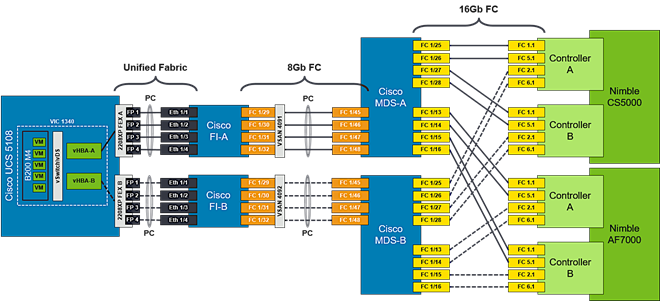

Figure 1 shows the solution design built using the following components:

· Cisco Unified Computing System (UCS) – provides the compute resources, including blade and rack servers

· Cisco UCS 6200 Series Fabric Interconnects (FI) – for unified management and access to storage and LAN networks

· Cisco Nexus 9300 series switches – for connectivity to users, other LAN networks and Cisco UCS domains

· Cisco MDS fabric switches – provide a SAN fabric for Fibre Channel (FC) connectivity to storage

· Nimble AF7000 all flash array – provides all flash storage with SSD

· Nimble CS5000 adaptive flash array – provides hybrid flash storage with SSDs and HDDs

· VMware vSphere 6.5 – Hypervisor

The design uses 10 Gigabit Ethernet (GbE) connections between Cisco UCS and Nexus switches, along with 8G and 16G FC SAN connections to Nimble Storage through Cisco MDS switches. Each functional layer (compute, network, and storage) of the architecture is designed to be highly resilient with redundant links and components. The hosts are SAN booted with block-level access to both all flash and hybrid storage provided by Nimble Unified Flash Fabric. The solution also combines the stateless server provisioning and management capabilities of Cisco UCS Manager with the wizard-based provisioning and simplified storage management of Nimble Storage to provide quick provisioning and deployment of infrastructure resources.

Figure 1 Cisco-Nimble Solution Design

The SAN fabric in the design consists of a pair of redundant Cisco MDS 9148S switches, with 8G FC connections to Cisco UCS 6200 Series Fabric Interconnects and 16G FC connectivity to Nimble arrays. The architecture can also support 16G FC end-to-end by upgrading from Cisco UCS 6200 to 6300 Series Fabric Interconnects. Two SAN fabrics provide redundant paths for SAN boot and for accessing shared storage on Nimble.

The LAN design uses a pair of Cisco Nexus 9300 series switches deployed in standalone mode to provide 10GbE connectivity to the Cisco UCS FIs. Cisco Nexus 9300 switches provide a migration path, with investment protection, to both 40GbE LAN and Cisco ACI. The solution can also support 100GbE LAN connectivity by upgrading to Nexus 93180 series switches.

Compute

Cisco UCS B-Series and C-Series servers provide the compute resources in this design. Several models of these servers are supported, but Cisco UCS B200 M4 and C220 M4 servers were used in validation.

The compute design consists of an infrastructure management POD and an application POD, each with dedicated hardware and running VMware ESXi 6.5 hypervisor.

The infrastructure POD hosts the management and common infrastructure services that are necessary to deploy, operate, and manage the entire deployment. For validation, common components such as Active Directory, DNS, DHCP, and vCenter were deployed in the infrastructure management POD.

The application POD consists of any virtualized application hosted on Cisco UCS that the business requires. For validation, IOMeter virtual machines representing application VMs were hosted on the POD.

Features available at the hypervisor layer (for example, VMware clustering, high availability) are leveraged in both the infrastructure management and application PODs.

Depending on the size and needs of a deployment, infrastructure VMs could be deployed with application VMs on a single POD, but this design assumes a dedicated POD for infrastructure management.

The deployment guidance in this document assumes that the deployment environment already has an infrastructure management POD and therefore the focus of this document is on the deployment of an application POD.

From a connectivity standpoint, the compute resources (blade server chassis and rack mount servers) connect into a pair of Cisco UCS 6248 Fabric Interconnects. The blade server chassis connects to the FI through the Cisco FEX module located at the back of the chassis. The rack mount server uses the direct-attached design to connect directly into the FIs and does not use a FEX in this design.

Two Cisco UCS Fabric Interconnects are deployed in a cluster for redundancy and provide two fabric planes (FI-A, FI-B) that are completely independent of each other from a data plane perspective. In the event of a failure (or if the design only uses one FI), the fabric can be fully operational with one FI.

The FIs provide 10GbE connectivity to the LAN network infrastructure and 8G/16G FC connectivity to the SAN fabric. Together, the two FIs provide 40Gbps of aggregate bandwidth to the LAN network and 64Gbps to the SAN network. Link aggregation using port channels is used on the unified fabric FEX-to-FI connections and on the FI-to-Cisco MDS connections. Virtual port channels are used on the FI-to-Cisco Nexus uplinks to the LAN network.
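The aggregate bandwidth figures above follow from simple link arithmetic. The sketch below is illustrative only. The function name is hypothetical, and the link counts (two 10GbE uplinks per FI, four 8G FC links per FI, two FIs) are assumptions taken from the link descriptions elsewhere in this document rather than output from any Cisco tool.

```python
def aggregate_gbps(devices: int, links_per_device: int, link_speed_gbps: int) -> int:
    """Total bandwidth across identical links on identical devices."""
    return devices * links_per_device * link_speed_gbps


# Assumed link counts, based on the topology described in this document.
lan_gbps = aggregate_gbps(devices=2, links_per_device=2, link_speed_gbps=10)
san_gbps = aggregate_gbps(devices=2, links_per_device=4, link_speed_gbps=8)

print(f"LAN uplink bandwidth: {lan_gbps} Gbps")
print(f"SAN uplink bandwidth: {san_gbps} Gbps")
```

These are nominal link rates. Usable throughput depends on traffic distribution across port channel members and on protocol overhead.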

Virtualization

The hosts in the design run VMware vSphere 6.5 and leverage VMware High Availability (HA) clusters to mitigate host failures. Each compute resource is assigned to one of the VMware HA clusters. Virtual machines (VMs) associated with infrastructure management (for example, vCenter) and other services common to the entire deployment (for example, DNS) are part of a separate, dedicated infrastructure management cluster. VMs associated with production applications are part of one or more application PODs, which are the focus of this document.

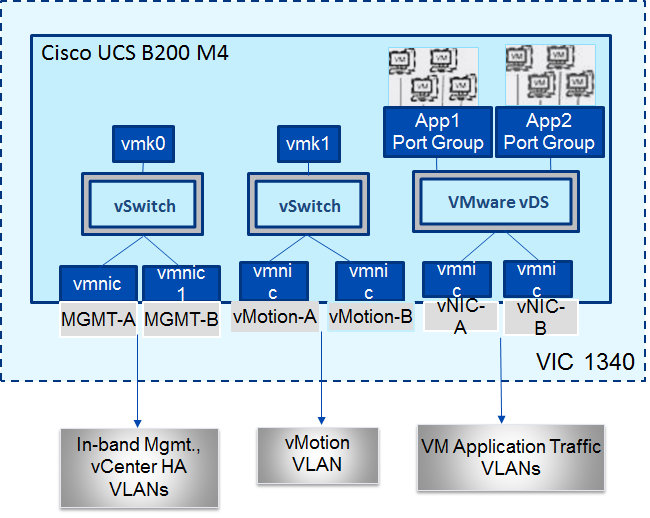

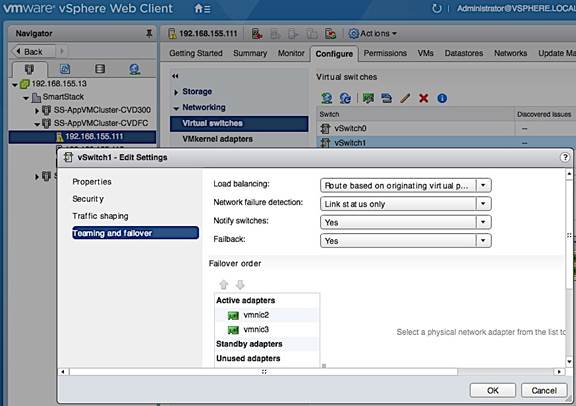

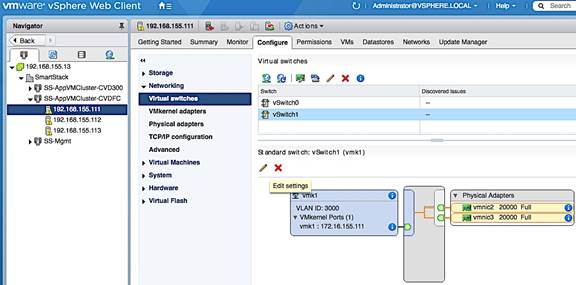

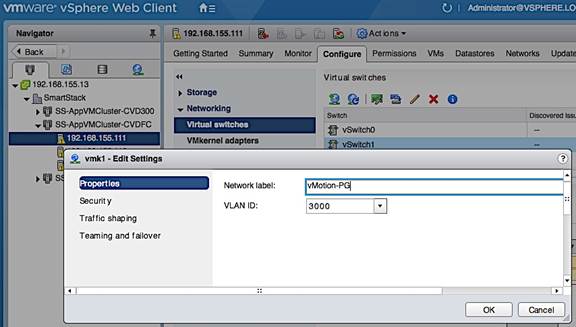

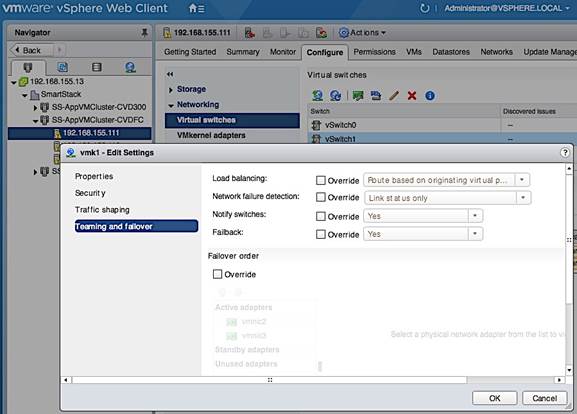

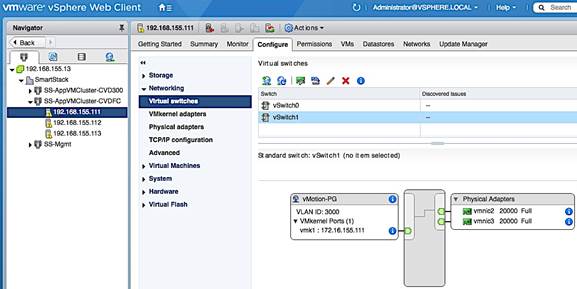

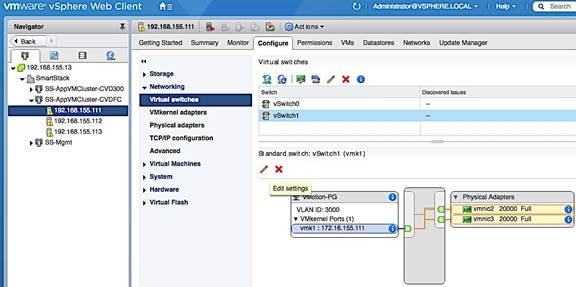

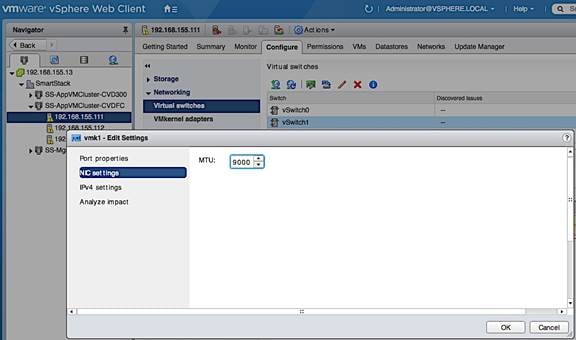

The design uses both VMware virtual switches (vSwitch) and distributed virtual switches (vDS) for virtual networking. In each cluster, a distributed virtual switch is used for application VM traffic while virtual switches are used for host management and vMotion traffic. This design also supports the use of a single distributed virtual switch for all traffic as well as the use of additional distributed switches for application VMs.

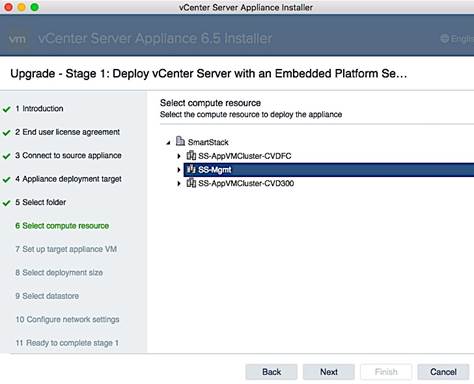

The Cisco-Nimble solution architecture was validated using two VMware HA clusters, one for infrastructure management (SS-Mgmt) and one for application VMs (SS-AppVMCluster-CVDFC). Cisco UCS servers were assigned to one of the two clusters. Each host has two virtual switches (vSwitch0, vSwitch1) for management and vMotion traffic. Each host is also part of a distributed virtual switch (SS-vDS).

Each UCS server is equipped with a Cisco Virtual Interface Card (VIC) as its physical adapter. The Cisco VIC presents multiple vPCIe devices, or virtual Network Interface Cards (vNICs), to each host, which vSphere identifies as vmnics. The hosts are dual-homed to Fabric A and Fabric B for redundancy and load balancing. In this design, the following vNICs and virtual switches are used on each host. The –A vNICs connect to unified fabric A and the –B vNICs to unified fabric B.

· One vSwitch for in-band management with two vNICs (MGMT-A, MGMT-B). On the management cluster, this switch would be used for vCenter HA

· A second vSwitch for vMotion traffic with two vNICs (vMotion-A, vMotion-B)

· One vDS (SS-vDS) with two vNICs (vNIC-A, vNIC-B) for application VM traffic
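The layout listed above can be captured as a small data structure and checked for dual-homing. This is a minimal sketch, not part of the validated configuration. The dictionary and function names are hypothetical, and the assignment of management to vSwitch0 and vMotion to vSwitch1 is an assumption; only the vNIC and SS-vDS names come from this document.

```python
# Hypothetical model of the per-host virtual switch layout.
# Assumption: vSwitch0 carries management and vSwitch1 carries vMotion.
HOST_SWITCHES = {
    "vSwitch0": ["MGMT-A", "MGMT-B"],
    "vSwitch1": ["vMotion-A", "vMotion-B"],
    "SS-vDS": ["vNIC-A", "vNIC-B"],
}


def fabrics_for(vnics):
    """Return the set of fabric suffixes (A or B) found in the vNIC names."""
    return {name.rsplit("-", 1)[1] for name in vnics}


def is_dual_homed(vnics):
    """True when the vNICs reach both unified fabric A and unified fabric B."""
    return fabrics_for(vnics) == {"A", "B"}


for switch, vnics in HOST_SWITCHES.items():
    status = "dual-homed" if is_dual_homed(vnics) else "single fabric only"
    print(f"{switch}: {status}")
```

A check like this relies on the –A/–B naming convention, so it is only as reliable as the vNIC names themselves.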

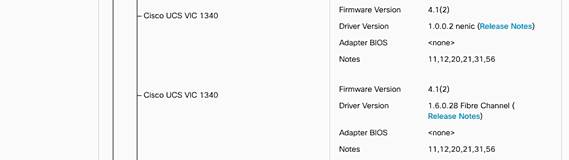

The Cisco UCS B200 M4 and C220 M4 servers in this setup were equipped with Cisco VIC 1340 and VIC 1227 cards respectively.

The virtual networking within each ESXi host is shown in the figure below.

Figure 2 Cisco UCS Server Virtual Networking

The Cisco-Nimble solution architecture uses two port groups, one for in-band management (MGMT-PG) and one for vMotion traffic (vMOTION-PG), with the following VMkernel NICs (vmk):

· vmk0 - ESXi management

· vmk1 - vMotion interface

The ESXi management interface is used for host-to-vCenter connectivity, direct access to the ESXi shell, and VMware cluster communication. The vMotion interfaces are on private subnets that support data access and VM migration across the Cisco-Nimble infrastructure. Additional port groups are created as needed on the VMware vDS for application traffic.

Storage

The Unified Flash Fabric that provides the storage in this design uses Nimble Storage’s AF7000 all flash array and CS5000 adaptive flash array clustered together in a single group. Up to four all flash or adaptive flash arrays can be clustered together in the same group. Nondisruptive scale out provides increased performance and capacity and the ability to tier storage media within the same group.

Although specific array model numbers are used in this design, the validation is focused on the version of Nimble OS running on each array. Both all flash and adaptive flash arrays operate using the same Nimble OS build, which is the foundation of the Unified Flash Fabric. The common operating system combined with a universal hardware architecture means that any array model number can be used in this design provided it operates with the validated Nimble OS version.

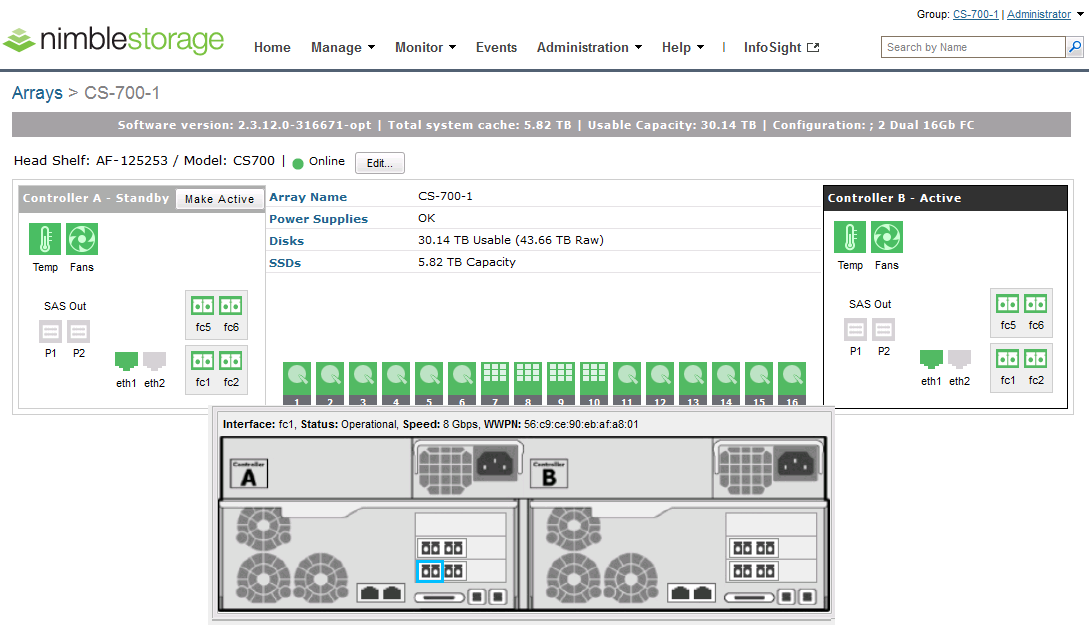

Each Nimble Storage controller contains redundant management ports, redundant power supplies, and the ability to add up to three I/O expansion cards. I/O expansion cards can be 16Gb FC or 10Gb Ethernet cards with options for 2 or 4 ports per card. Each array and expansion shelf contains 24 drive bays, with each bay accepting a single 3.5” HDD or a single Dual Flash Carrier (DFC) with two 2.5” SSDs that can be individually removed. Flash capacity expansion is as simple as adding drives to the existing array or attaching expansion shelves to the SAS 3.0 (12Gb) expansion ports. Additional HDD capacity expansion is possible by attaching expansion shelves. Adaptive flash arrays can add HDD or all flash expansion shelves.

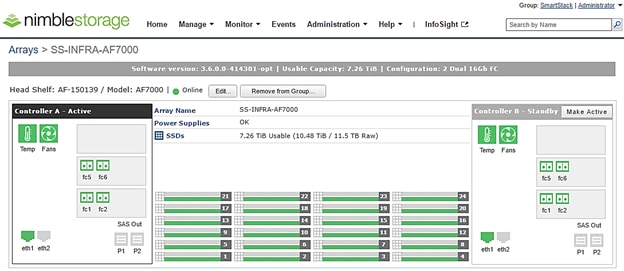

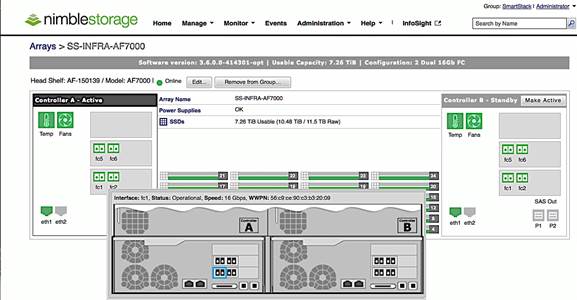

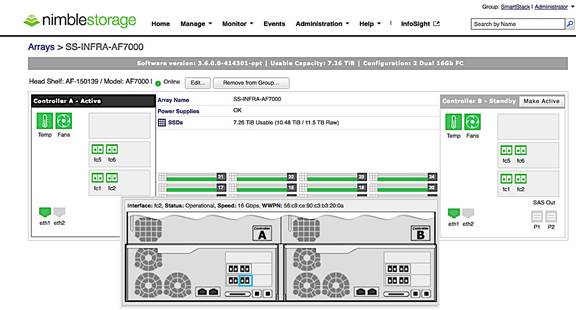

The AF7000 all flash array configuration used in this design contains 24 x 480GB SSDs for a total of 11.5TB raw flash capacity. Each controller (active and passive) contains 2 x 16Gb FC expansion cards for multiple redundant connections to each SAN fabric (Fabric A and Fabric B).

Figure 3 Nimble Storage AF7000 array configuration overview

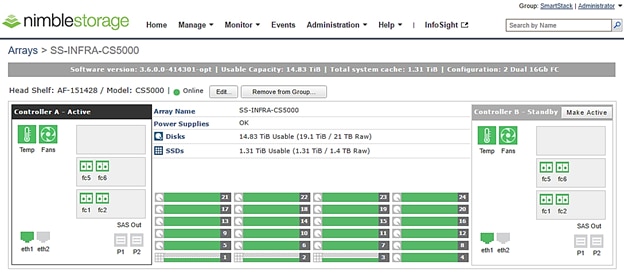

The CS5000 adaptive flash array configuration contains 3 x 480GB SSDs for flash cache with a total of 1.4TB raw flash capacity with another 21 x 1TB HDDs for a total of 21TB raw disk capacity. Each controller (active and passive) contains 2 x 16Gb FC expansion cards for multiple redundant connections to each SAN fabric (Fabric A and Fabric B).

Figure 4 Nimble Storage CS5000 adaptive flash array configuration overview
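The raw capacity figures quoted for the two arrays can be reproduced from the drive counts and sizes given above. This is a minimal sketch using decimal units (1 TB = 1000 GB); the function name is hypothetical. It computes raw capacity only and does not estimate usable capacity after RAID, sparing, or data reduction.

```python
def raw_capacity_tb(drive_count: int, drive_size_gb: int) -> float:
    """Raw capacity in decimal terabytes (1 TB = 1000 GB)."""
    return drive_count * drive_size_gb / 1000


af7000_flash_tb = raw_capacity_tb(24, 480)    # AF7000: 24 x 480GB SSDs
cs5000_cache_tb = raw_capacity_tb(3, 480)     # CS5000: 3 x 480GB SSDs (flash cache)
cs5000_disk_tb = raw_capacity_tb(21, 1000)    # CS5000: 21 x 1TB HDDs

print(f"AF7000 raw flash: {af7000_flash_tb:.1f} TB")
print(f"CS5000 raw flash cache: {cs5000_cache_tb:.1f} TB")
print(f"CS5000 raw disk: {cs5000_disk_tb:.1f} TB")
```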

To optimize and model the required performance, the storage arrays and virtual machines are remotely monitored from the cloud using Nimble InfoSight™. InfoSight provides insight into data I/O patterns and capacity usage, along with trend analysis for capacity planning and expansion. It also enables proactive ticketing and notification when issues occur. This level of analytics into data patterns and requirements, combined with Nimble's expandability, allows an array to scale performance in exactly the desired area.

This design uses 16G fabric connectivity with two FC interface cards to provide 64G of FC bandwidth per controller. For additional FC bandwidth, a third FC card can be deployed on each controller, but this interface is typically used for 10GbE connections to other arrays in a scale-out cluster for data replication traffic. The links between a pair of Cisco MDS and Fabric Interconnect switches are aggregated using 4x8G FC links to deliver 32G of bandwidth across the SAN fabric to each controller. Nimble Storage arrays support nondisruptive upgrades for adding capacity (scale deep), upgrading controllers (scale up), or adding arrays (scale out).

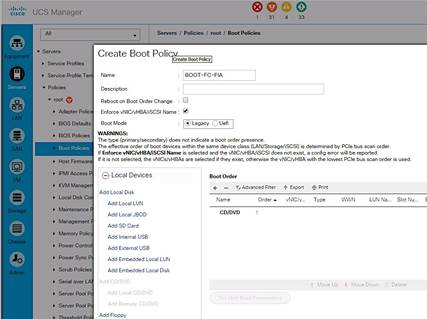

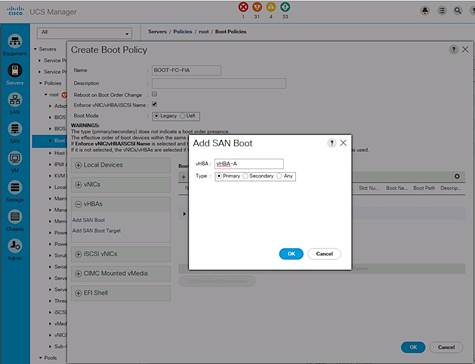

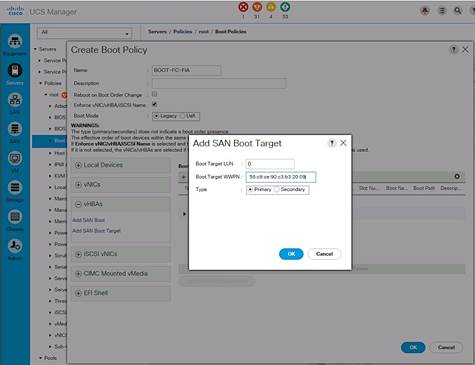

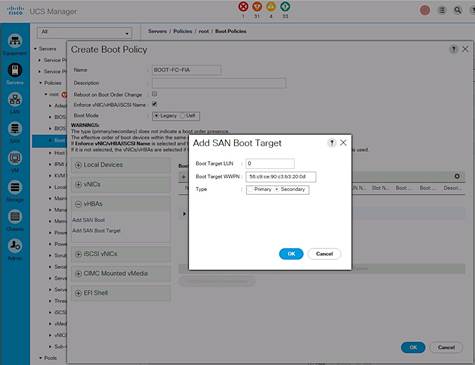

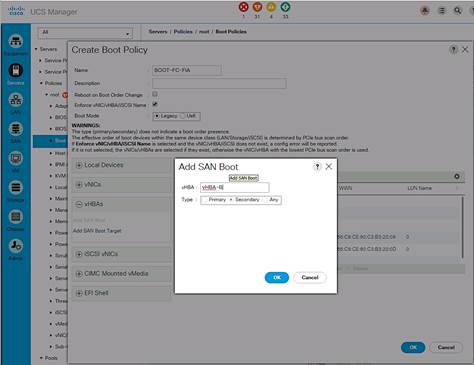

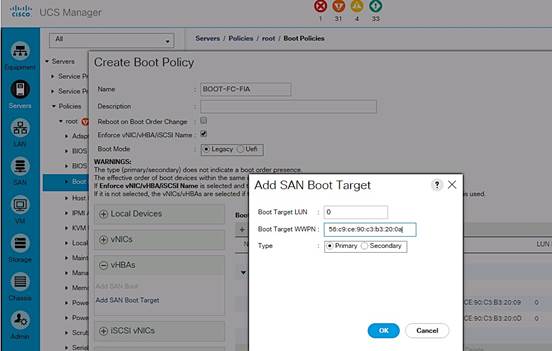

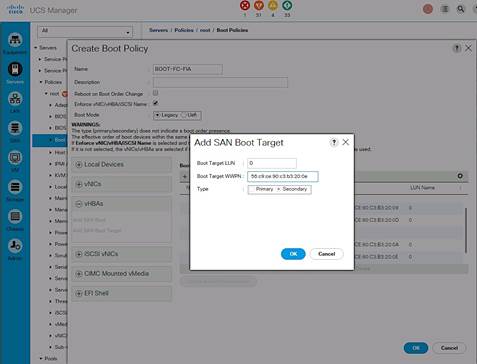

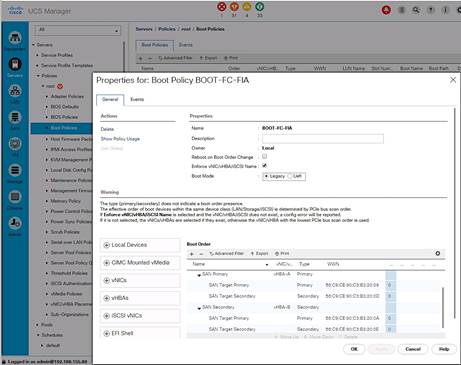

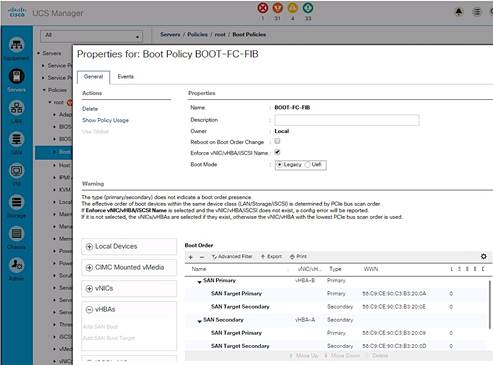

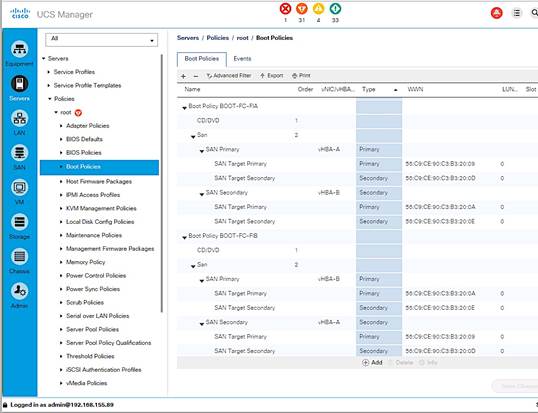

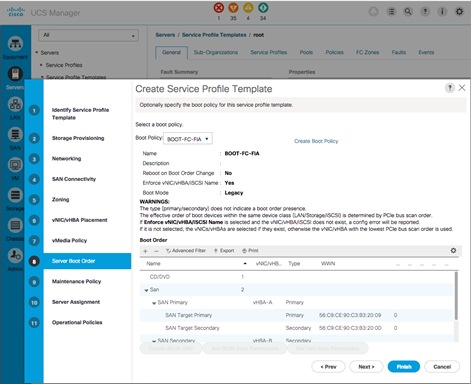

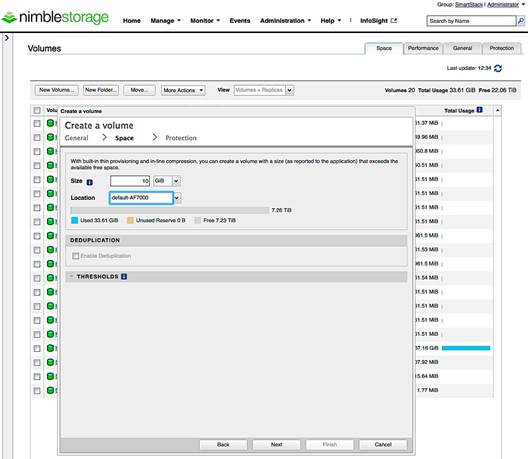

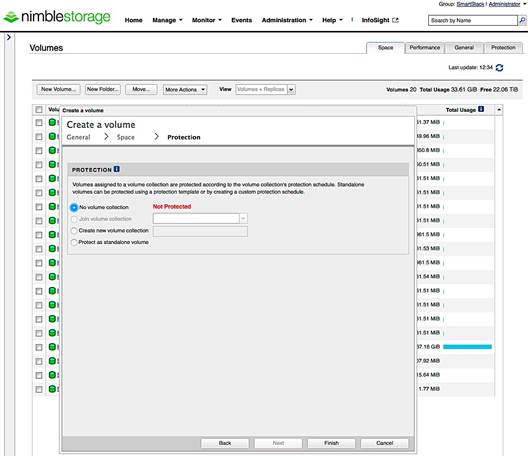

The design uses FC SAN boot for the primary boot device of the Cisco UCS blades. The Service Profile used to configure and deploy Cisco UCS servers includes a boot policy that points to the Nimble Storage arrays. The boot policy specifies primary and secondary SAN paths to the two controllers (active and passive) on each array where the boot volumes reside. A second boot policy is also configured, but with the primary and secondary paths reversed from those of the first boot policy. The second boot policy is used to load balance SAN boot across different paths when multiple servers are booting. This is an optional aspect of the design that can be helpful in larger deployments for distributing load when multiple servers have to be booted simultaneously.

Each server has a dedicated boot volume (40GB) on the Nimble storage array. Nimble Storage arrays provide an Access Control List at the initiator level to allow connections only from the appropriate Cisco UCS blade. During the initial SAN boot, the server attaches to all primary and secondary connections to both active and standby controllers. This provides for normal boot operations even when a controller or primary path is offline.

The hosts are configured with the Nimble Connection Manager and Path Selection Policy, which optimize MPIO (multipathing) settings. This allows for proper FC path management and failover connectivity with Nimble Storage volumes.
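The idea of spreading SAN boot load across the two boot policies described above can be sketched as a simple alternating assignment. This is an illustration under stated assumptions, not the validated procedure: the policy names, path labels, and host names are hypothetical, and in practice the boot policy is selected in the Cisco UCS service profile or template rather than by a script.

```python
from itertools import cycle

# Hypothetical policy names; each maps to its (primary, secondary) SAN path.
BOOT_POLICIES = {
    "Boot-Fabric-A-First": ("Fabric A", "Fabric B"),
    "Boot-Fabric-B-First": ("Fabric B", "Fabric A"),
}


def assign_boot_policies(servers):
    """Alternate the two boot policies across the servers, in order."""
    policy_names = cycle(BOOT_POLICIES)
    return {server: next(policy_names) for server in servers}


# Hypothetical host names, used only for illustration.
servers = [f"esxi-host-{n}" for n in range(1, 5)]
for server, policy in assign_boot_policies(servers).items():
    primary, secondary = BOOT_POLICIES[policy]
    print(f"{server}: {policy} (primary {primary}, secondary {secondary})")
```

With an even split, roughly half of the servers attempt their primary boot path on each fabric, which is the load distribution the second boot policy is meant to provide.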

The following section of this document provides more details on the connectivity and high availability aspects of this design.

Networking

The LAN network provides network reachability to the applications hosted on Cisco UCS servers in the data center. The infrastructure consists of a pair of Cisco Nexus 9372 PX switches deployed in NX-OS standalone mode. Two 10Gbps links from each Cisco Nexus switch are connected to a 10Gbps port on each FI to provide 20Gbps of uplink bandwidth through each Cisco Nexus switch. Virtual Port Channels (vPCs) are configured across these links to provide link and node redundancy while providing higher uplink bandwidth. VLAN trunking is enabled on these links because multiple application data, management, and vMotion VLANs need to traverse them. Cisco-recommended design practices are also implemented in this design; see the Cisco Nexus 9000 best practices section of the Design Guide for details.

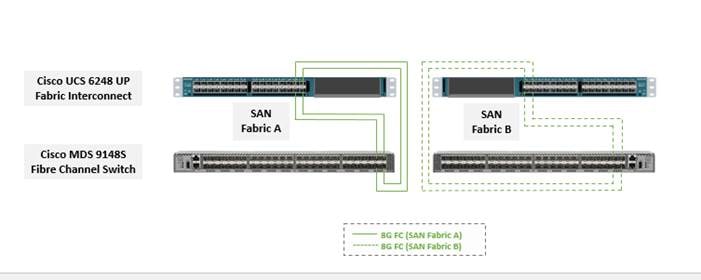

The SAN network provides Fibre Channel connectivity to the Nimble storage array and consists of a pair of Cisco MDS switches. The Cisco MDS switches form completely separate fabrics (SAN fabric A, SAN fabric B) and use a dual VSAN (vSAN-A, vSAN-B) design to provide two redundant and completely diverse paths to the Nimble Storage array.

Link aggregation using port channels is used to aggregate 4 x 8G FC links to provide 32G of FC bandwidth on each SAN fabric between the Cisco FI and Cisco MDS switches. The Nimble array uses individual links (link aggregation is unnecessary), with 2 links from each SAN fabric connected to both controllers to provide 32G of FC bandwidth to each controller (active and passive).

Cisco MDS switches are deployed with N-Port ID Virtualization (NPIV) enabled to support the virtualized environment running on Cisco UCS blade and rack servers. NPIV is necessary to provide isolation in virtualized environments where multiple virtual machines are running on a single server but a LUN needs to be presented to only one VM and not to all VMs running on the server. Without NPIV, LUNs would be presented to the host and, as a result, to all VMs running on that host. To support NPIV on the Cisco UCS servers, the Cisco UCS Fabric Interconnects that connect the servers to the SAN fabric are enabled for N-Port Virtualization (NPV) mode by configuring them to operate in end-host mode (as opposed to FC switching mode). NPV enables Cisco FIs to proxy fabric login, registration, and other messages from the servers to the SAN fabric without being a part of the SAN fabric. This is important for keeping the limited number of Domain IDs that fabric switches require to a minimum.

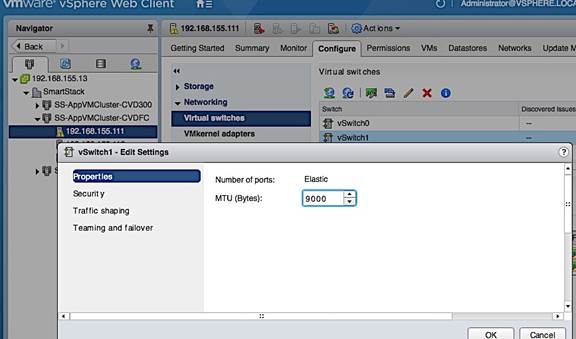

The design also uses jumbo frames with an MTU of 9216 Bytes across the LAN and Unified Fabric links. Jumbo frames increase the throughput between devices by enabling larger sized frames to be sent and received on the wire while reducing the CPU resources necessary to process them. Jumbo frames were enabled during validation on the LAN network links in the Cisco Nexus switching layer and on the Unified Fabric links.
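The reduction in per-frame processing that jumbo frames offer can be illustrated by counting the frames needed to move a fixed amount of data. This is a rough sketch under simplifying assumptions: it treats the MTU as the payload carried per frame, ignores protocol headers, and uses 1500 bytes as the conventional default Ethernet MTU for comparison. The function name and transfer size are hypothetical.

```python
def frames_needed(transfer_bytes: int, mtu_bytes: int) -> int:
    """Frames required to carry the data, rounding up (integer ceiling)."""
    return (transfer_bytes + mtu_bytes - 1) // mtu_bytes


TRANSFER_BYTES = 1_000_000_000  # 1 GB, an arbitrary example size

standard_frames = frames_needed(TRANSFER_BYTES, 1500)
jumbo_frames = frames_needed(TRANSFER_BYTES, 9216)

print(f"Frames at MTU 1500: {standard_frames}")
print(f"Frames at MTU 9216: {jumbo_frames}")
print(f"Reduction factor: {standard_frames / jumbo_frames:.1f}x")
```

Fewer frames for the same data means fewer per-frame operations on each device in the path. The benefit only applies when every hop supports the larger MTU.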

Low-Level Design

Compute

To validate this design, a Cisco UCS with 4x Cisco B200M4 half-width blades and a Cisco C220 M4 rack mount server running VMware ESXi 6.5 were deployed in the POD to host application VMs. The servers were configured to be part of a cluster with VMware high availability enabled. The blade server chassis is connected to a pair of Cisco UCS 6248 FIs using a pair of Cisco 2208 XP fabric extenders located at the back of the chassis. Eight 10GbE links are used for FEX to FI connectivity, 4 from FEX-A to FI-A and 4 from FEX-B to FI-B to provide an aggregate access bandwidth of 80Gbps to the unified fabric.

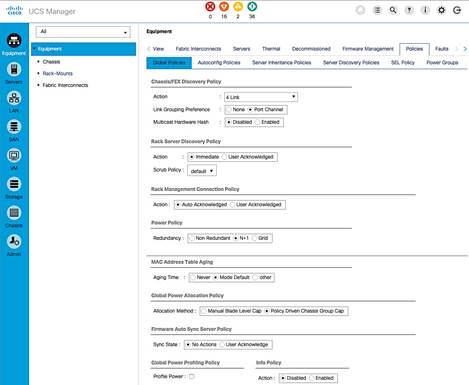

The Fabric Interconnects in the design are deployed in End-host Ethernet switching mode. Ethernet switching mode determines how the fabric interconnects behave as switching devices between the servers and the network. End-host mode is the default and generally recommended mode of operation. In this mode, the fabric interconnects appear to the upstream LAN devices as end hosts with multiple adapters and do not run Spanning Tree. The Cisco Nexus switch ports that connect to the FI are therefore deployed as spanning tree edge ports.

The ports on the Cisco UCS 6248 FI are unified ports that can carry either Ethernet or Fibre Channel traffic, depending on the configured port mode.

Ethernet ports on the fabric interconnects are not configured by default and must be explicitly configured as a specific type, which determines the port’s behavior. The port types used in this design are:

· Uplink ports for connecting to the Cisco Nexus 9300 series switches and external LAN network

· Fibre Channel ports for connecting to the SAN Fabric

· Server ports for connecting to external Cisco UCS C-series rack mount servers

Cisco UCS Manager (Cisco UCSM) is used to provision and manage Cisco UCS and its sub-components (chassis, FI, blade, and rack mount servers). Cisco UCSM runs on the Fabric Interconnects.

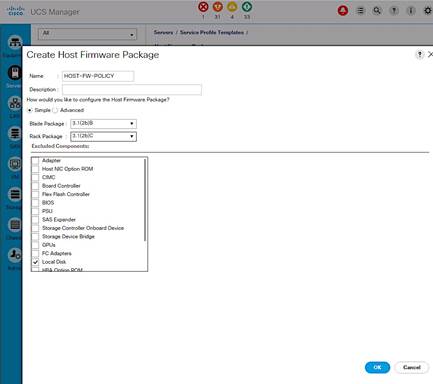

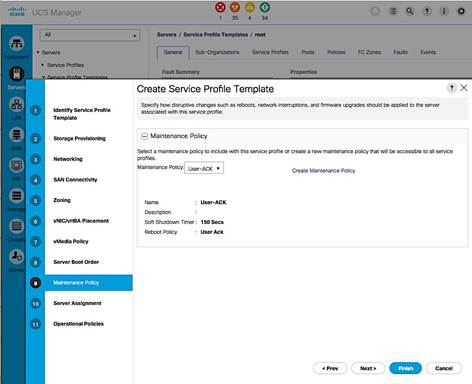

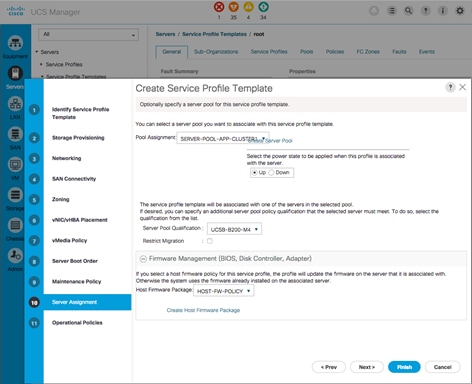

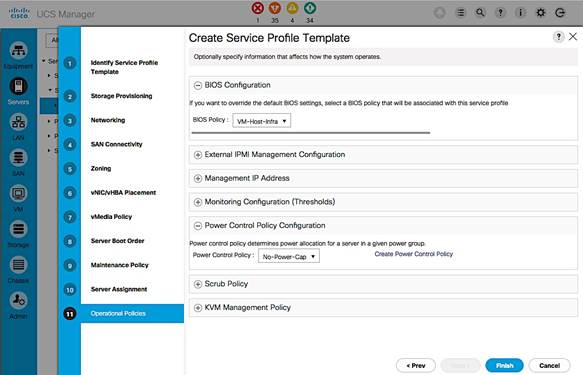

A key feature of Cisco UCSM is Service Profile Templates that enable the abstraction of policies, pools, and other aspects of a server configuration and consolidate it in the form of a template. The configuration in a service profile template includes:

· Server Identity (UUID Pool, MAC Address Pool, IP Pools etc.)

· Server Policies (BIOS Policy, Host Firmware Policy, Boot Policy etc.)

· LAN Configuration (VLAN, QoS, Jumbo Frames etc.)

· Storage Configuration (IQN pool)

Once created, the template can be used to generate service profiles that configure and deploy an individual server or a group of servers. A service profile defines the server and its storage and networking characteristics. Service profile templates reduce deployment time, increase operational agility, and simplify deployment. They are used in this design to rapidly configure and deploy multiple servers with minimal modification.
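The relationship between a template, its identity pools, and the service profiles generated from it can be pictured with a small Python model. This is a conceptual sketch only and does not use the Cisco UCS Manager API: the class names, the profile name prefix, and the boot policy name are hypothetical, and the WWPN prefixes are borrowed from the FC deployment information given later in this document.

```python
# Conceptual model of a service profile template drawing identities from pools.
# This is NOT the Cisco UCS Manager API. Class names, the "ESXi-Host" prefix
# and the "SAN-Boot" policy name are hypothetical.
from dataclasses import dataclass
from typing import Iterator, List


def wwpn_pool(prefix: str, size: int) -> Iterator[str]:
    """Yield sequential WWPNs by varying the last byte of the address."""
    for i in range(size):
        yield f"{prefix}:{i:02X}"


@dataclass
class ServiceProfile:
    name: str
    wwpn_a: str
    wwpn_b: str
    boot_policy: str


@dataclass
class ServiceProfileTemplate:
    name_prefix: str
    boot_policy: str

    def instantiate(self, count: int, pool_a: Iterator[str],
                    pool_b: Iterator[str]) -> List[ServiceProfile]:
        """Create profiles that share policy but get unique pool identities."""
        return [
            ServiceProfile(f"{self.name_prefix}-{n:02d}", next(pool_a),
                           next(pool_b), self.boot_policy)
            for n in range(1, count + 1)
        ]


template = ServiceProfileTemplate(name_prefix="ESXi-Host", boot_policy="SAN-Boot")
profiles = template.instantiate(
    3,
    wwpn_pool("20:00:00:25:B5:11:AA", 128),
    wwpn_pool("20:00:00:25:B5:11:BB", 128),
)
for profile in profiles:
    print(profile)
```

Each generated profile carries the same policy settings while receiving its own identities, which is what allows multiple servers to be deployed with minimal per-server modification.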

LAN Network

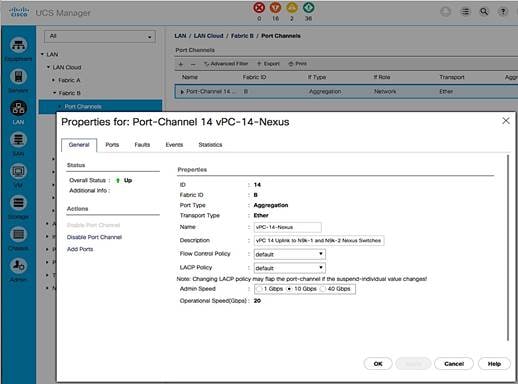

The LAN infrastructure design consists of a pair of Cisco Nexus 9372 PX switches. Each Cisco UCS FI connects to the switches using 2x10GbE links, and the FI Ethernet ports used for this connectivity are configured as Uplink Ports. The uplink ports are enabled for link aggregation, with the FI-A uplinks in port channel 13 and the FI-B uplinks in port channel 14. The two uplinks from each FI are connected to different Cisco Nexus switches and configured as part of a virtual PortChannel (vPC) on the Cisco Nexus switches: vPC 13 connects FI-A to Cisco Nexus A and Cisco Nexus B, and vPC 14 connects FI-B to Cisco Nexus A and Cisco Nexus B. The Cisco Nexus vPC feature allows a device to aggregate links going to different Cisco Nexus switches. As a result, the uplink ports appear as a regular PortChannel to Cisco UCS. Link aggregation, spanning tree, and other configuration parameters between Cisco UCS and the Cisco Nexus switches follow Cisco recommended best practices; see the Design Guide associated with this document for more details.

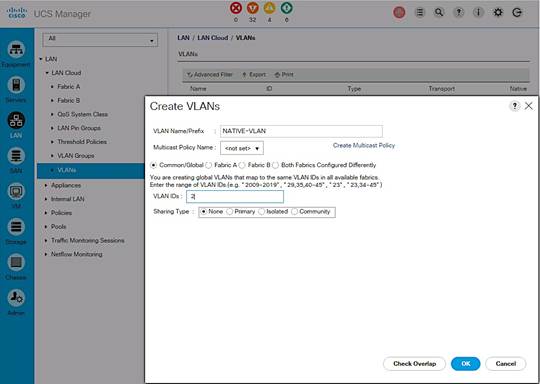

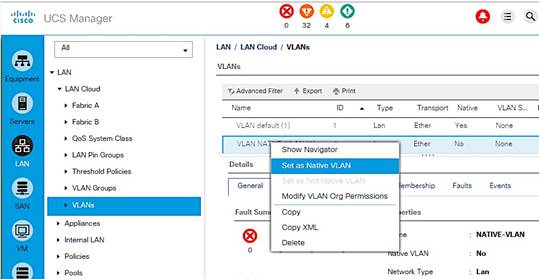

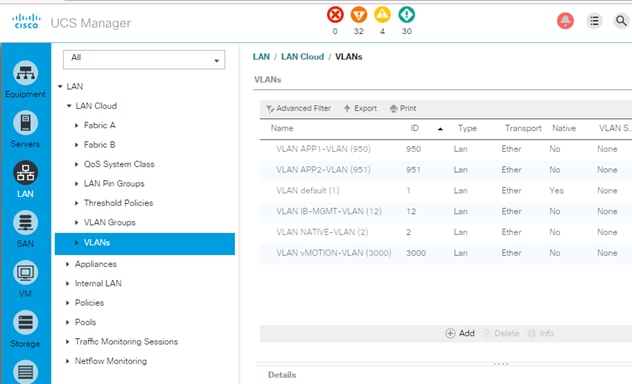

Multiple VLANs need to traverse the uplink ports, and the uplink ports are automatically configured as IEEE 802.1Q trunks for all VLANs defined on the fabric interconnect. VLANs for management, vMotion, and application traffic are defined in the fabric interconnects and enabled on the uplinks. The VLANs used in validating the design are summarized in the table below.

Table 1 Uplink VLANs Trunked From Cisco UCS to Cisco Nexus

| VLAN Type |

VLAN ID |

Description |

| Native VLAN |

2 |

Untagged VLAN Traffic are forwarded on this VLAN |

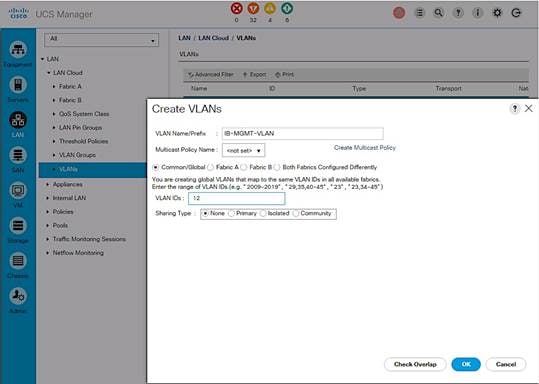

| In-Band Management |

12 |

VLAN used for in-band management, including ESXi hosts and Infrastructure VMs |

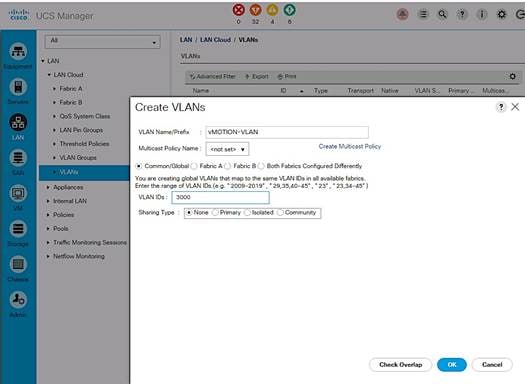

| vMotion |

3000 |

VLAN used by ESXi for moving VMs between hosts. vMotion uses management VLAN |

| vCenter HA Network |

3001 |

VLAN used by vCENTER HA network for HA traffic between Active, Passive and Witness vCenter nodes. (Only used in the Management POD) |

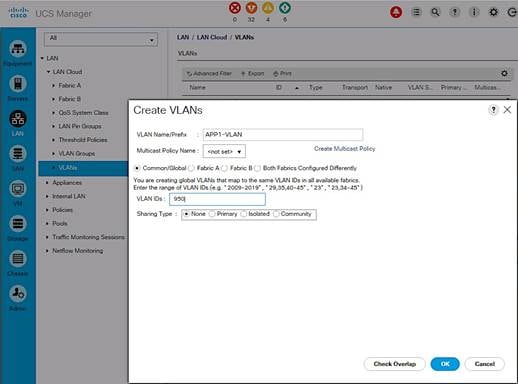

| VM Application Traffic |

950, 951 |

VLAN used by Application Data Traffic |

The detailed deployment procedures for configuring Cisco UCS and Cisco Nexus switches are provided in the Deployment section of this document.

SAN Fabric and Storage

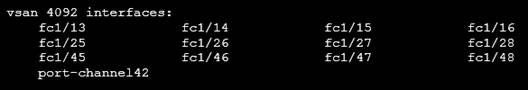

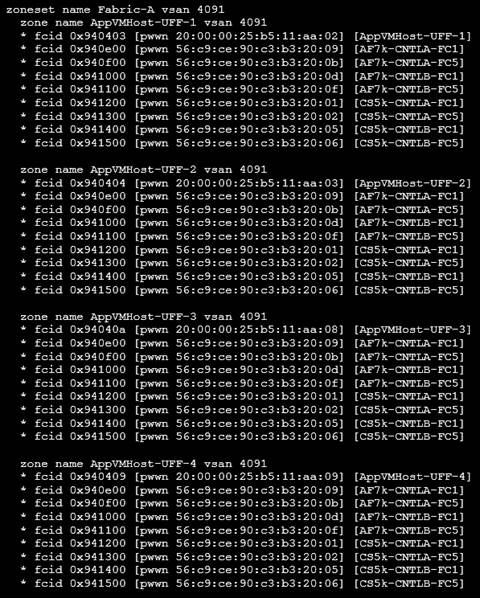

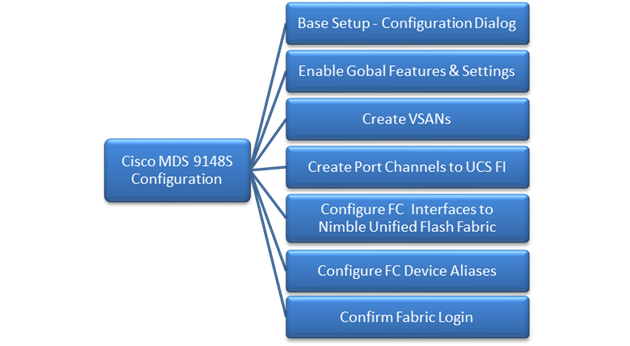

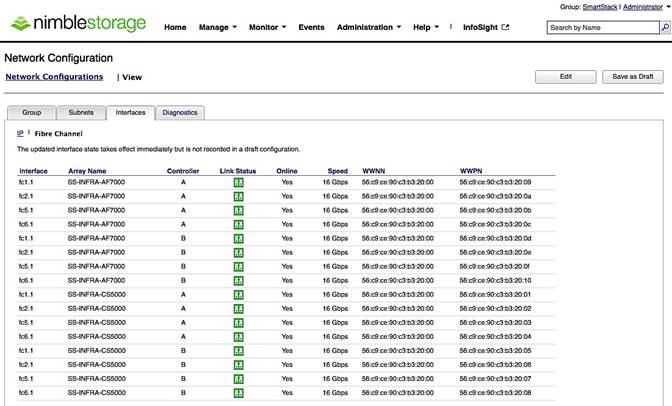

FC Cisco MDS Switch to Nimble Storage Array Connectivity

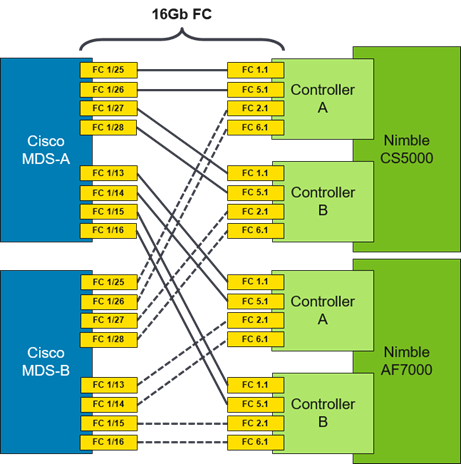

This design uses dual fabrics, each with its own VSAN configuration, which allows two diverse paths for FC connectivity. In this example, MDS ports FC1/13-16 (AF7000) and FC1/25-28 (CS5000) connect to the Nimble Storage target ports. The connections on the Nimble Storage controllers must mirror each other (that is, FC 1.1 and FC 5.1 from each controller connect to MDS-A, while FC 2.1 and FC 6.1 from each controller connect to MDS-B).

Figure 5 Cisco MDS to Nimble Storage array connectivity diagram

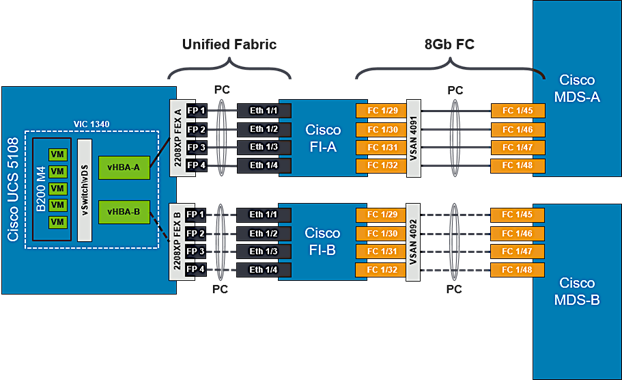

FC Cisco MDS Switch to Cisco UCS Fabric Interconnect Topology

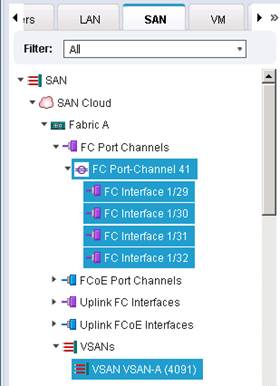

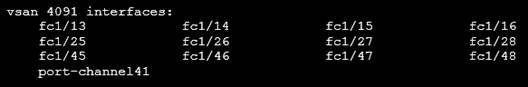

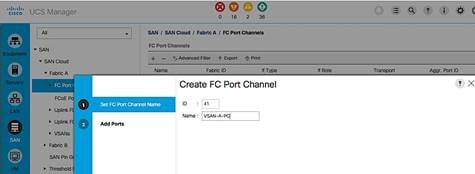

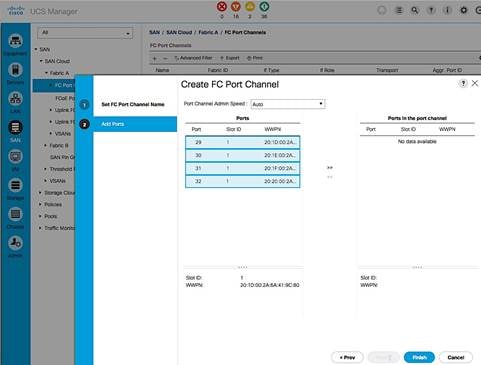

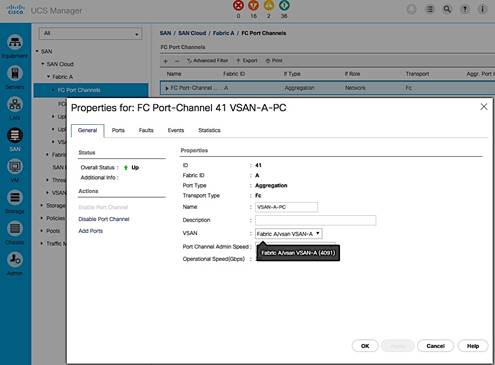

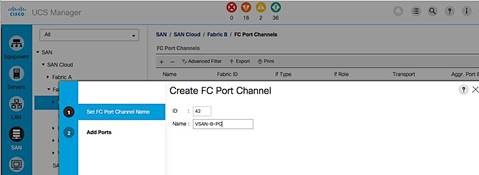

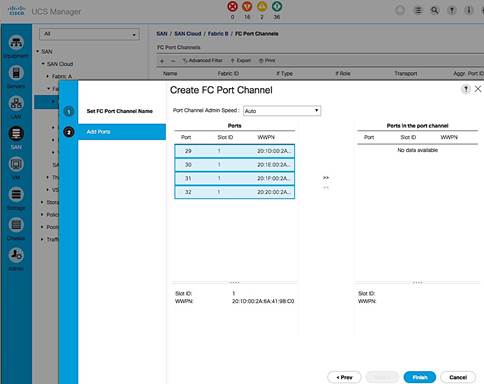

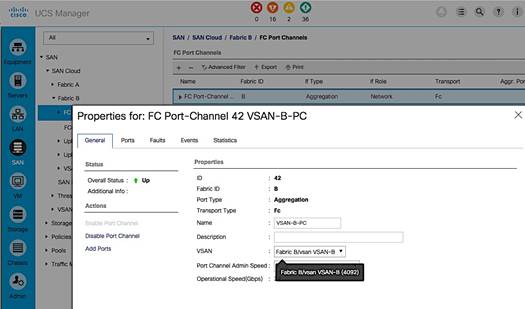

On both Cisco MDS switches, ports FC1/45-48 are part of an FC port-channel that connects to Fabric Interconnect ports FC1/29-32. FC port-channel 41 connects Fabric Interconnect A to MDS-A and FC port-channel 42 connects Fabric Interconnect B to MDS-B. In Cisco UCSM, VSAN 4091 needs to be created under SAN tab > SAN Cloud > Fabric A, and the FC port channel needs to be defined for the ports connected to Cisco MDS-A (for example, 29-32).

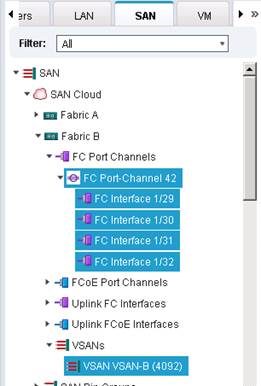

Figure 6 Cisco UCS Servers to Cisco UCS Fabric Interconnect Connectivity Diagram

Also, VSAN 4092 needs to be created under SAN tab > SAN Cloud > Fabric B, and the FC port channel needs to be defined for the ports connected to Cisco MDS-B (for example, 29-32). When complete, the screen should look similar to the one shown below.

Cisco MDS Switch Fabric Configuration

On the Cisco MDS-A switch, a port channel must be created on the ports (FC1/45-48) that connect to Fabric Interconnect A. The port channel ID should match the configuration on the Cisco UCS Fabric Interconnect (41). Note that VSAN membership is required for both the physical ports and the port-channel. Additionally, confirm that the Nimble FC ports are part of the same VSAN (4091).

On the Cisco MDS-B switch, a port channel must be created on the ports (FC1/45-48) that connect to Fabric Interconnect B. The port channel ID should match the configuration on the Cisco UCS Fabric Interconnect (42). Note that VSAN membership is required for both the physical ports and the port-channel. Additionally, confirm that the Nimble FC ports are part of the same VSAN (4092).

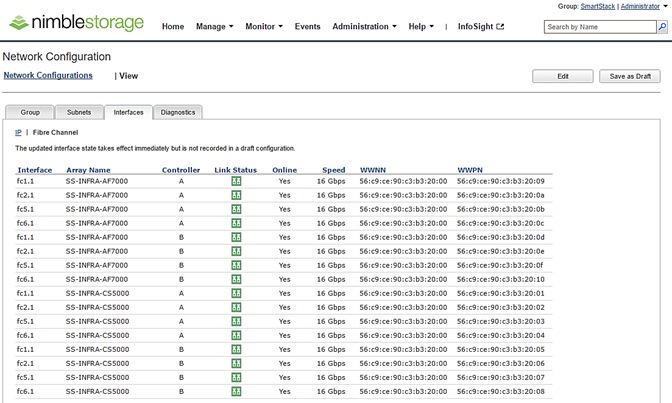

For further security and traffic isolation, the zoning design allows a single initiator to access multiple target ports. Note that both the Active and Standby target ports are configured in the same zone.
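The single-initiator, multiple-target zoning approach described above can be sketched as a small Python helper that renders one zone per host vHBA. The initiator WWPN is the first address of WWPN Pool-A used in this design; the zone name and the four target WWPNs are hypothetical placeholders and must be replaced with the actual Nimble target WWPNs for the fabric.

```python
# Sketch of single-initiator zoning for one fabric (VSAN 4091 on MDS-A).
# The zone name and target WWPNs below are HYPOTHETICAL placeholders; only
# the initiator WWPN (first address of WWPN Pool-A) and the VSAN ID come
# from this document.
from typing import List


def build_zone(zone_name: str, vsan: int, initiator: str,
               targets: List[str]) -> List[str]:
    """Render a zone containing one initiator and all of its target ports."""
    lines = [f"zone name {zone_name} vsan {vsan}",
             f"  member pwwn {initiator}"]
    lines += [f"  member pwwn {target}" for target in targets]
    return lines


initiator_a = "20:00:00:25:B5:11:AA:00"

# Two target ports on each controller (active and standby) per fabric.
targets_a = [
    "50:00:00:00:00:00:0a:01",  # hypothetical: Controller A, first port
    "50:00:00:00:00:00:0a:05",  # hypothetical: Controller A, second port
    "50:00:00:00:00:00:0b:01",  # hypothetical: Controller B, first port
    "50:00:00:00:00:00:0b:05",  # hypothetical: Controller B, second port
]

zone = build_zone("ESXi-01-A", 4091, initiator_a, targets_a)
print("\n".join(zone))
```

Keeping both the active and standby controller ports in the same zone means the host already has zoned paths to the standby controller if a controller failover occurs.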

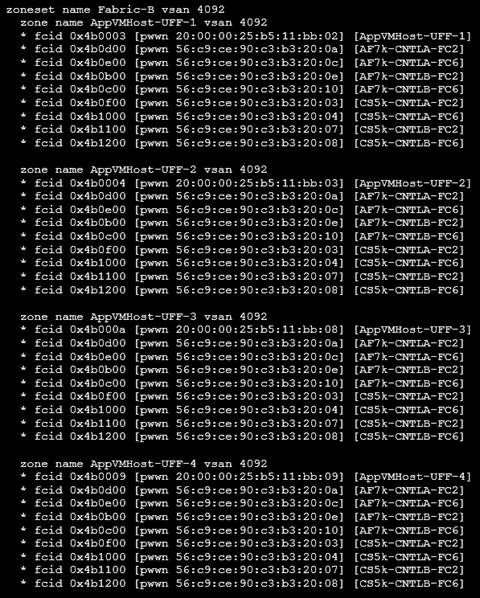

Figure 7 Nimble Storage AF7000 and CS5000 WWPN Target Ports

Cisco MDS-A Zoning Configuration

Cisco MDS-B Zoning Configuration

Cisco UCS Fabric Interconnect VSAN Configuration

Table 2 Storage VSANs between Cisco UCS and Nimble

| VSAN Type | VSAN ID | Description |
| --- | --- | --- |
| FC Path A | 4091 | VSAN used for FC traffic on Fabric A. This VSAN exists only on Fabric A. |
| FC Path B | 4092 | VSAN used for FC traffic on Fabric B. This VSAN exists only on Fabric B. |

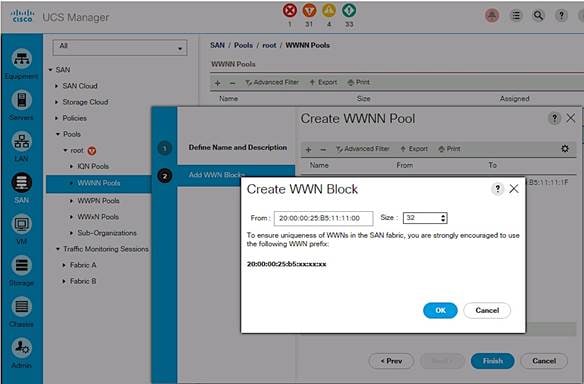

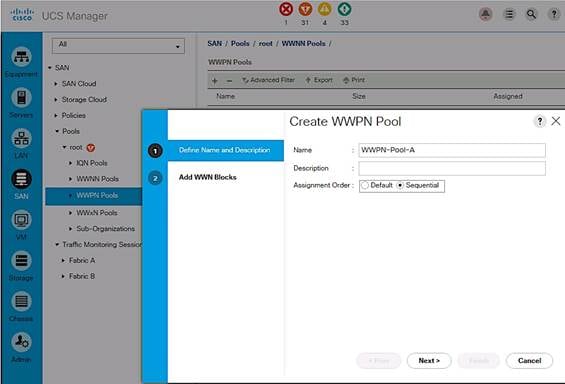

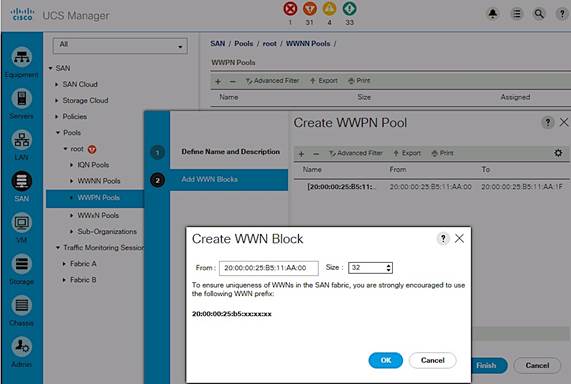

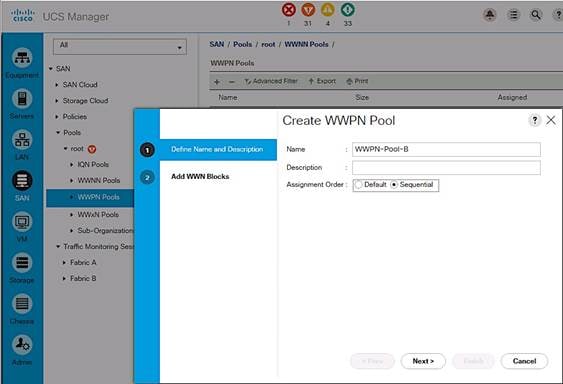

For the host side configuration, a single WWNN and dual WWPN configuration was used for the two independent FC paths as identified below.

Table 3 FC Deployment Information

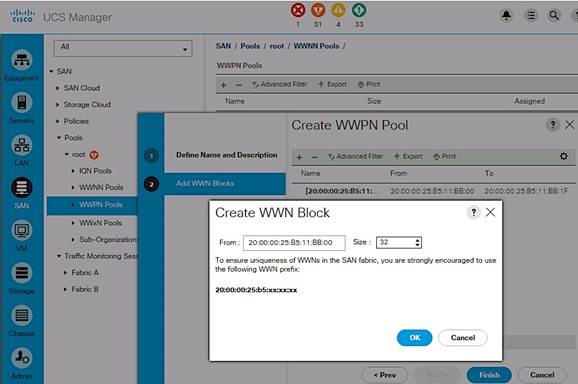

| Pool | Address Range |
| --- | --- |
| WWNN Pool | 20:00:00:25:B5:11:11:00 - 20:00:00:25:B5:11:11:1F |
| WWPN Pool-A | 20:00:00:25:B5:11:AA:00 - 20:00:00:25:B5:11:AA:7F |
| WWPN Pool-B | 20:00:00:25:B5:11:BB:00 - 20:00:00:25:B5:11:BB:7F |
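To see how many identities each pool in the table provides, the ranges can be converted to integers and counted. The following Python sketch does this; the function names are illustrative.

```python
# Count the addresses available in each WWN pool listed in the table above.
# Function names are illustrative.


def wwn_to_int(wwn: str) -> int:
    """Convert a colon-separated WWN into its integer value."""
    return int(wwn.replace(":", ""), 16)


def pool_size(first: str, last: str) -> int:
    """Return the number of addresses in an inclusive WWN range."""
    return wwn_to_int(last) - wwn_to_int(first) + 1


pools = {
    "WWNN Pool": ("20:00:00:25:B5:11:11:00", "20:00:00:25:B5:11:11:1F"),
    "WWPN Pool-A": ("20:00:00:25:B5:11:AA:00", "20:00:00:25:B5:11:AA:7F"),
    "WWPN Pool-B": ("20:00:00:25:B5:11:BB:00", "20:00:00:25:B5:11:BB:7F"),
}

sizes = {name: pool_size(first, last) for name, (first, last) in pools.items()}
for name, size in sizes.items():
    print(f"{name}: {size} addresses")
```

Because each host consumes one WWNN and one WWPN from each fabric pool, the smallest pool bounds the number of service profiles these ranges can supply.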

Cisco UCS Service Profile Considerations

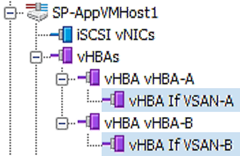

Each ESXi service profile is configured with two fabric-diverse vHBAs to allow connectivity into VSAN-A and VSAN-B.

SAN Boot

Each Cisco UCS blade was deployed using FC SAN boot. Using SAN boot affords the advantages of Nimble snapshot, recovery, replication, and cloning mechanisms.

In this design, each Cisco UCS blade uses two vHBAs that connect to diverse fabrics. Each blade has a boot volume created on the Nimble Storage array, and the array uses an initiator group to honor connections only from that blade's service profile. During FC SAN boot, the blade connects to both the primary and secondary WWPN targets on both the active and standby controllers. This allows normal boot operations regardless of which Nimble Storage controller is active. The host software uses MPIO, and the Nimble Connection Manager assists with FC path management. The VMware hosts were also deployed in a cluster to allow for HA failover and to avoid a single point of failure at the hypervisor layer.

Host Storage MPIO Considerations

The VMware ESXi host is configured to use the NIMBLE_PSP_DIRECTED path policy from the Nimble Connection Manager. See the “Setting up Nimble Connection Manager” section for the detailed procedures on installing and configuring Nimble’s multipathing policy. For this design, 4 paths will be in the Active (I/O) state and 4 paths will be in Standby.
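The path counts above can be derived by enumerating the connectivity described earlier: one vHBA per fabric, and two target ports per controller on each fabric. The Python sketch below assumes a single volume on a single array and arbitrarily treats Controller A as the active controller; both are assumptions made for illustration.

```python
# Enumerate host-to-array FC paths for one volume on one array.
# Assumptions: one vHBA per fabric, Controller A is currently active, and
# port naming follows the "FC 1.1 / FC 5.1 / FC 2.1 / FC 6.1" convention
# used in this document.
from itertools import product

FABRIC_TARGET_PORTS = {
    "A": ["FC 1.1", "FC 5.1"],  # ports cabled to MDS-A on each controller
    "B": ["FC 2.1", "FC 6.1"],  # ports cabled to MDS-B on each controller
}
CONTROLLER_STATE = {"Controller A": "Active (I/O)", "Controller B": "Standby"}

paths = [
    {"vhba": f"vHBA-{fabric}", "controller": ctrl, "port": port, "state": state}
    for fabric, ports in FABRIC_TARGET_PORTS.items()
    for (ctrl, state), port in product(CONTROLLER_STATE.items(), ports)
]

active = [p for p in paths if p["state"] == "Active (I/O)"]
standby = [p for p in paths if p["state"] == "Standby"]

print(f"total={len(paths)} active={len(active)} standby={len(standby)}")
```

If a host shows fewer than eight paths per volume, the zoning, VSAN membership, or cabling on one of the fabrics is a reasonable first place to look.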

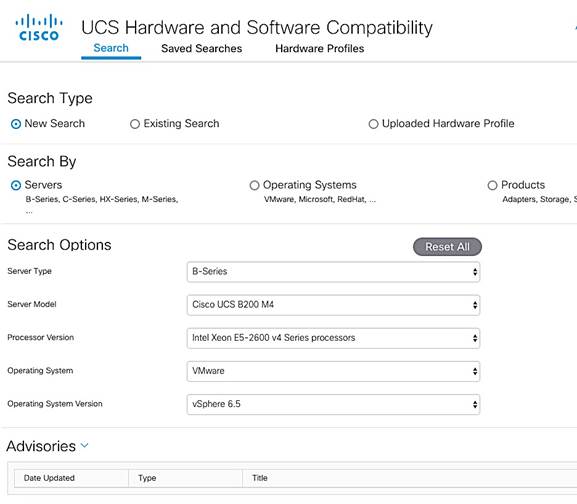

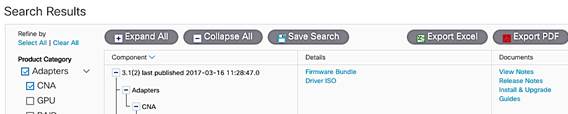

The table below is a summary of all the components used in this design.

Table 4 Infrastructure Components and Software Revisions

|  | Components | Software Version | Comments |
| --- | --- | --- | --- |
| Network | Cisco Nexus 9372 PX x 2 | 7.0(3)I2(4) | NX-OS Standalone mode; Provides connectivity to Enterprise LAN and users |
|  | Cisco UCS 6248 UP Fabric Interconnects x 2 | 3.1.2(b) |  |
|  | Cisco MDS 9148S x 2 | 7.3(0)DY1 | 16G Multilayer FC Switch |
| Compute | Cisco UCS 5108 Blade Server Chassis | 3.1.2(b) |  |
|  | Cisco UCS B200 M4 servers x 2 | 3.1.2(b) |  |
|  | Cisco UCS C220 M4 server x 1 | 3.1.2(b) |  |
|  | ENIC Driver | 1.0.0.2 | Ethernet driver for Cisco VIC |
|  | Cisco FNIC driver | 1.6.0.28 | FCoE driver for Cisco VIC |
|  | Adapter Firmware | 4.1(2) | Cisco VIC Adapter Firmware |
| Management | Cisco UCS Manager | 3.1(2b) |  |
|  | vCenter plugin for Nimble |  |  |
| Storage | Nimble Storage AF7000 All Flash Array | NimbleOS 3.6.0.0 GA | Build: 3.6.0.0-414301-opt |
|  | Nimble Storage CS5000 Adaptive Flash Array | NimbleOS 3.6.0.0 GA | Build: 3.6.0.0-414301-opt |
|  | Nimble Connection Manager (NCM) for ESXi | 3.4.0 | Build: 3.4.0-650005 |
|  | Nimble Windows Toolkit (NWT) | 3.2.0.410 |  |
| Virtualization | VMware vSphere | 6.5 | Build: 6.5.0-4564106 |
|  | VMware vCenter Server Appliance | 6.5 | Build: 6.5.0-4602587 |
| Tools | Workload – IOMeter Tool |  |  |
| Other | Microsoft Active Directory/DNS |  |  |

Cisco Nexus Configuration Workflow

Figure 8 Cisco Nexus 9000 Configuration Workflow

Base Setup – Configuration Dialog

This section outlines the base setup of Nexus 9000 switches using the configuration dialog.

Cisco Nexus A

To set up the initial configuration for the first Cisco Nexus switch complete the following steps:

1. Connect to the serial or console port of the switch

Enter the configuration method: console

Abort Auto Provisioning and continue with normal setup?(yes/no)[n]: y

---- System Admin Account Setup ----

Do you want to enforce secure password standard (yes/no) [y]:

Enter the password for "admin":

Confirm the password for "admin":

---- Basic System Configuration Dialog VDC: 1 ----

This setup utility will guide you through the basic configuration of the system. Setup configures only enough connectivity for management of the system.

Please register Cisco Nexus9000 Family devices promptly with your supplier. Failure to register may affect response times for initial service calls. Nexus9000 devices must be registered to receive entitled support services.

Press Enter at anytime to skip a dialog. Use ctrl-c at anytime to skip the remaining dialogs.

Would you like to enter the basic configuration dialog (yes/no): y

Create another login account (yes/no) [n]: n

Configure read-only SNMP community string (yes/no) [n]:

Configure read-write SNMP community string (yes/no) [n]:

Enter the switch name: D01-n9k1

Continue with Out-of-band (mgmt0) management configuration? (yes/no) [y]:

Mgmt0 IPv4 address: 192.168.155.3

Mgmt0 IPv4 netmask: 255.255.255.0

Configure the default gateway? (yes/no) [y]:

IPv4 address of the default gateway: 192.168.155.1

Configure advanced IP options? (yes/no) [n]:

Enable the telnet service? (yes/no) [n]:

Enable the ssh service? (yes/no) [y]:

Type of ssh key you would like to generate (dsa/rsa) [rsa]:

Number of rsa key bits <1024-2048> [1024]: 2048

Configure the ntp server? (yes/no) [n]: y

NTP server IPv4 address: 192.168.155.254

Configure default interface layer (L3/L2) [L2]:

Configure default switchport interface state (shut/no shut) [no shut]:

Configure CoPP system profile (strict/moderate/lenient/dense/skip) [strict]:

2. Review the settings printed to the console. If they are correct, answer yes to apply and save the configuration

3. Wait for the login prompt to make sure that the configuration has been saved prior to proceeding.

Cisco Nexus B

To set up the initial configuration for the second Cisco Nexus switch complete the following steps:

1. Connect to the serial or console port of the switch

2. The Cisco Nexus B switch should present a configuration dialog identical to that of Cisco Nexus A shown above. Provide the configuration parameters specific to Cisco Nexus B for the following configuration variables. All other parameters should be identical to that of Cisco Nexus A.

· Admin password

· Nexus B Hostname: D01-n9k2

· Nexus B mgmt0 IP address: 192.168.155.4

· Nexus B mgmt0 Netmask: 255.255.255.0

· Nexus B mgmt0 Default Gateway: 192.168.155.1
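Before moving on, it can be useful to sanity-check that the two mgmt0 addresses and the default gateway share a subnet, since the vPC peer-keepalive configured later runs between these management interfaces. The following Python sketch performs that check with the standard `ipaddress` module; the function name is illustrative.

```python
# Sanity check: both Nexus mgmt0 addresses must be on the gateway's subnet.
# Addresses and netmask are the ones used in this document; the function
# name is illustrative.
import ipaddress
from typing import Iterable


def same_subnet(addresses: Iterable[str], netmask: str, gateway: str) -> bool:
    """Return True if every address lies in the gateway's network."""
    network = ipaddress.ip_network(f"{gateway}/{netmask}", strict=False)
    return all(ipaddress.ip_address(addr) in network for addr in addresses)


mgmt0 = {"D01-n9k1": "192.168.155.3", "D01-n9k2": "192.168.155.4"}

subnet_ok = same_subnet(mgmt0.values(), "255.255.255.0", "192.168.155.1")
unique_ok = len(set(mgmt0.values())) == len(mgmt0)

print(f"same subnet: {subnet_ok}, unique addresses: {unique_ok}")
```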

In the next section we look at the configuration required on the Cisco Nexus Switches for LAN and management connectivity.

Enabling Global Features and Settings

On both Cisco Nexus switches, enable the following features and best practices.

feature nxapi

feature udld

feature interface-vlan

feature lacp

feature vpc

spanning-tree port type edge bpduguard default

spanning-tree port type edge bpdufilter default

port-channel load-balance src-dst ip-l4port-vlan

Create VLANs

Create the VLANs used in this solution.

vlan 12

name IB-MGMT

vlan 2

name Native-VLAN

vlan 950

name APP1

vlan 951

name APP2

vlan 3000

name vMotion
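Because the same VLAN definitions must be entered on both Cisco Nexus switches, some teams keep them in a single data structure and render the commands from it. The following Python sketch shows one way to do that; it only emits the `vlan` and `name` commands listed above, and the function name is illustrative.

```python
# Render the VLAN definitions above from one source of truth so that both
# Nexus switches receive identical configuration. Function name is
# illustrative; VLAN IDs and names are the ones used in this document.
from typing import Dict

VLANS: Dict[int, str] = {
    12: "IB-MGMT",
    2: "Native-VLAN",
    950: "APP1",
    951: "APP2",
    3000: "vMotion",
}


def vlan_config(vlans: Dict[int, str]) -> str:
    """Return NX-OS VLAN configuration lines, ordered by VLAN ID."""
    lines = []
    for vlan_id, name in sorted(vlans.items()):
        lines.append(f"vlan {vlan_id}")
        lines.append(f"  name {name}")
    return "\n".join(lines)


config_text = vlan_config(VLANS)
print(config_text)
```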

Configure vPC Domain, Peer-Link and Settings

Cisco Nexus A

To configure virtual port channels (vPCs) for switch A, complete the following steps:

1. From the global configuration mode, create a new vPC domain:

vpc domain 155

2. Make Cisco Nexus A the primary vPC peer by defining a low priority value:

role priority 10

3. Use the management interfaces on the supervisors of the Cisco Nexus switches to establish a keepalive link:

peer-keepalive destination 192.168.155.4 source 192.168.155.3

4. Enable the following features for this vPC domain:

peer-switch

delay restore 150

peer-gateway

ip arp synchronize

auto-recovery

5. Save the configuration:

copy run start

Cisco Nexus B

To configure vPCs for switch B, complete the following steps:

1. From the global configuration mode, create a new vPC domain:

vpc domain 155

2. Make Cisco Nexus A the primary vPC peer by defining a higher priority value on this switch:

role priority 20

3. Use the management interfaces on the supervisors of the Cisco Nexus switches to establish a keepalive link:

peer-keepalive destination 192.168.155.3 source 192.168.155.4

4. Enable the following features for this vPC domain:

peer-switch

delay restore 150

peer-gateway

ip arp synchronize

auto-recovery

5. Save the configuration:

copy run start
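The vPC domain configuration on the two switches differs only in the role priority and in the direction of the peer-keepalive addresses. The Python sketch below renders both from shared parameters, which makes that symmetry explicit; the function name is illustrative and only the commands shown above are emitted.

```python
# Render the vPC domain configuration for both peers from shared parameters.
# Function and constant names are illustrative; the commands and values are
# the ones shown in the steps above.
from typing import List

VPC_FEATURES = [
    "peer-switch",
    "delay restore 150",
    "peer-gateway",
    "ip arp synchronize",
    "auto-recovery",
]


def vpc_domain_config(domain_id: int, role_priority: int,
                      local_ip: str, peer_ip: str) -> List[str]:
    """Return vPC domain lines for one switch."""
    lines = [
        f"vpc domain {domain_id}",
        f"  role priority {role_priority}",
        f"  peer-keepalive destination {peer_ip} source {local_ip}",
    ]
    lines += [f"  {feature}" for feature in VPC_FEATURES]
    return lines


# The lower role priority value makes Cisco Nexus A the primary vPC peer.
nexus_a = vpc_domain_config(155, 10, "192.168.155.3", "192.168.155.4")
nexus_b = vpc_domain_config(155, 20, "192.168.155.4", "192.168.155.3")

print("\n".join(nexus_a))
print()
print("\n".join(nexus_b))
```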

Configure Network Interfaces for VPC Peer Links

Cisco Nexus A

1. Define a port description for the interfaces connecting to VPC Peer D01-n9k2.

interface Eth1/53

description VPC Peer D01-n9k2:e1/53

interface Eth1/54

description VPC Peer D01-n9k2:e1/54

2. Apply a port channel to both VPC Peer links and bring up the interfaces.

interface Eth1/53,Eth1/54

channel-group 155 mode active

no shutdown

3. Enable UDLD on both interfaces to detect unidirectional links.

udld enable

4. Define a description for the port-channel connecting to D01-n9k2.

interface port-channel 155

description vPC peer-link

5. Make the port-channel a switchport, and configure a trunk to allow in-band management, VM traffic, and the native VLAN.

switchport

switchport mode trunk

switchport trunk native vlan 2

switchport trunk allowed vlan 12, 950-951, 3000

spanning-tree port type network

6. Make this port-channel the VPC peer link and bring it up.

vpc peer-link

no shutdown

copy run start

Cisco Nexus B

1. Define a port description for the interfaces connecting to VPC Peer D01-n9k1.

interface Eth1/53

description VPC Peer D01-n9k1:e1/53

interface Eth1/54

description VPC Peer D01-n9k1:e1/54

2. Apply a port channel to both VPC Peer links and bring up the interfaces.

interface Eth1/53,Eth1/54

channel-group 155 mode active

no shutdown

3. Enable UDLD on both interfaces to detect unidirectional links.

udld enable

4. Define a description for the port-channel connecting to D01-n9k1.

interface port-channel 155

description vPC peer-link

5. Make the port-channel a switchport, and configure a trunk to allow in-band management, VM traffic, and the native VLAN.

switchport mode trunk

switchport trunk native vlan 2

switchport trunk allowed vlan 12, 950-951, 3000

spanning-tree port type network

6. Make this port-channel the VPC peer link and bring it up.

vpc peer-link

no shutdown

copy run start
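The allowed VLAN list on the peer link should be the same on both switches and should include every VLAN carried on the vPC member port channels. The following Python sketch parses allowed-VLAN strings into sets so that two lists can be compared regardless of how ranges are written; the function name and the sample strings are illustrative.

```python
# Compare allowed-VLAN lists regardless of how ranges are written.
# Function name and sample strings are illustrative.
from typing import Set


def parse_vlan_list(text: str) -> Set[int]:
    """Expand a list such as '12, 950-951, 3000' into a set of VLAN IDs."""
    vlans: Set[int] = set()
    for part in text.split(","):
        part = part.strip()
        if not part:
            continue
        if "-" in part:
            low, high = part.split("-")
            vlans.update(range(int(low), int(high) + 1))
        else:
            vlans.add(int(part))
    return vlans


# Two spellings of the same list compare as equal.
same = parse_vlan_list("12, 950, 951, 3000") == parse_vlan_list("12, 950-951, 3000")

# A list that omits a VLAN is reported through the set difference.
missing = parse_vlan_list("12, 950-951, 3000") - parse_vlan_list("12, 950, 951")

print(f"equivalent: {same}, missing from second list: {sorted(missing)}")
```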

Configure Network Interfaces to Cisco UCS Fabric Interconnects

Cisco Nexus A

1. Define a description for the port-channel connecting to D01-FI-A.

interface port-channel 13

description D01-FI-A

2. Make the port-channel a switchport, and configure a trunk to allow in-band management, VM traffic, and the native VLANs.

switchport mode trunk

switchport trunk native vlan 2

switchport trunk allowed vlan 12, 950-951, 3000

3. Make the port channel and associated interfaces spanning tree edge ports.

spanning-tree port type edge trunk

spanning-tree guard root

no lacp graceful-convergence

mtu 9216

4. Make this a VPC port-channel and bring it up.

vpc 13

no shutdown

5. Define a port description for the interface connecting to D01-FI-A.

interface Eth1/23

description D01-FI-A:p15

6. Apply it to a port channel and bring up the interface.

channel-group 13 mode active

no shutdown

7. Enable UDLD to detect unidirectional links.

udld enable

8. Define a description for the port-channel connecting to D01-FI-B.

interface port-channel 14

description D01-FI-B

9. Make the port-channel a switchport, and configure a trunk to allow in-band management, VM traffic VLANs and the native VLAN.

switchport mode trunk

switchport trunk native vlan 2

switchport trunk allowed vlan 12, 950-951, 3000

10. Make the port channel and associated interfaces spanning tree edge ports.

spanning-tree port type edge trunk

spanning-tree guard root

no lacp graceful-convergence

mtu 9216

11. Make this a VPC port-channel and bring it up.

vpc 14

no shutdown

12. Define a port description for the interface connecting to D01-FI-B

interface Eth1/24

description D01-FI-B:p15

13. Apply it to a port channel and bring up the interface.

channel-group 14 mode active

no shutdown

14. Enable UDLD to detect unidirectional links.

udld enable

copy run start

Cisco Nexus B

1. Define a description for the port-channel connecting to D01-FI-A.

interface port-channel 13

description D01-FI-A

2. Make the port-channel a switchport, and configure a trunk to allow in-band management, VM traffic, and the native VLANs.

switchport mode trunk

switchport trunk native vlan 2

switchport trunk allowed vlan 12, 950-951, 3000

3. Make the port channel and associated interfaces spanning tree edge ports.

spanning-tree port type edge trunk

spanning-tree guard root

no lacp graceful-convergence

mtu 9216

4. Make this a VPC port-channel and bring it up.

vpc 13

no shutdown

5. Define a port description for the interface connecting to D01-FI-A

interface Eth1/23

description D01-FI-A:p2

6. Apply it to a port channel and bring up the interface.

channel-group 13 mode active

no shutdown

7. Enable UDLD to detect unidirectional links.

udld enable

8. Define a description for the port-channel connecting to D01-FI-B

interface port-channel 14

description D01-FI-B

9. Make the port-channel a switchport, and configure a trunk to allow in-band management, VM traffic VLANs and the native VLAN.

switchport mode trunk

switchport trunk native vlan 2

switchport trunk allowed vlan 12, 950-951, 3000

10. Make the port channel and associated interfaces spanning tree edge ports.

spanning-tree port type edge trunk

spanning-tree guard root

no lacp graceful-convergence

mtu 9216

11. Make this a VPC port-channel and bring it up.

vpc 14

no shutdown

12. Define a port description for the interface connecting to D01-FI-B

interface Eth1/24

description D01-FI-B:p2

13. Apply it to a port channel and bring up the interface.

channel-group 14 mode active

no shutdown

14. Enable UDLD to detect unidirectional links.

udld enable

copy run start
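The uplink configuration above repeats the same block four times with only the port channel number, descriptions, and member interface changing. The following Python sketch renders the block from those parameters, using the Cisco Nexus A values as the example; the function name is illustrative and only commands shown above are emitted.

```python
# Render the repeated FI uplink port-channel block from its parameters.
# Function name is illustrative; commands and the example values (Cisco
# Nexus A) are taken from the steps above.
from typing import List


def uplink_config(pc_id: int, pc_desc: str,
                  member_if: str, member_desc: str) -> List[str]:
    """Return the port-channel and member-interface lines for one FI uplink."""
    return [
        f"interface port-channel {pc_id}",
        f"  description {pc_desc}",
        "  switchport mode trunk",
        "  switchport trunk native vlan 2",
        "  switchport trunk allowed vlan 12, 950-951, 3000",
        "  spanning-tree port type edge trunk",
        "  spanning-tree guard root",
        "  no lacp graceful-convergence",
        "  mtu 9216",
        f"  vpc {pc_id}",
        "  no shutdown",
        f"interface {member_if}",
        f"  description {member_desc}",
        f"  channel-group {pc_id} mode active",
        "  no shutdown",
        "  udld enable",
    ]


nexus_a_uplinks = (
    uplink_config(13, "D01-FI-A", "Eth1/23", "D01-FI-A:p15")
    + uplink_config(14, "D01-FI-B", "Eth1/24", "D01-FI-B:p15")
)
print("\n".join(nexus_a_uplinks))
```

Generating the block this way keeps the vPC number, port channel number, and channel-group number in step, which is where copy-and-paste mistakes in descriptions and IDs tend to appear.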

This section provides the procedure for initializing a Nimble Storage array and setting up basic IP connectivity. Note that the dialog below is specific to an FC array setup.

Nimble Storage Configuration Workflow

Figure 9 Nimble Storage Configuration Workflow

Base Setup of Nimble Storage Array

Nimble Setup Manager

The steps discussed in this section apply to setting up both an all flash and an adaptive flash array (as they both run the same OS and distribution). The Nimble Setup Manager is part of the Nimble Storage Windows Toolkit. In this section, the Nimble Setup Manager is the only component that needs to be installed. The Nimble Setup Manager is used to do the initial setup of the array and can be downloaded from InfoSight at this location: https://infosight.nimblestorage.com/InfoSight/media/software/active/1/103/Setup-NimbleNWT-x64.3.4.0.2.zip

The version of the setup manager used must be the same as or higher than the version of NimbleOS being deployed. Always check InfoSight to see all of the currently available versions of the Windows Toolkit.

Initialize Nimble Storage Array

1. In the Windows Start menu, click Nimble Storage > Nimble Setup Manager.

2. Select one of the uninitialized arrays from the Nimble Setup Manager list and click Next.

If the array is not visible in Nimble Setup Manager, verify that the array’s eth1 ports of both controllers are on the same subnet as the Windows host.

Configure Nimble OS using the GUI

1. Choose the appropriate group option and click Next.

a. Set up the array but do not join a group. Continue to Step 5.

b. Add the array to an existing group.

If you chose to join an existing group, your browser automatically redirects to the login screen of the group leader array. See Add Array to Group Using the GUI to complete the configuration.

2. Provide or change the following initial management settings and click Finish:

· Array name

· Group name

· Management IP address and subnet mask for the eth1 interface

· Default gateway IP address

· Optional. Administrator password

3. You may see a warning similar to “There is a problem with this website's security certificate”. It is safe to ignore this warning and click Continue.

If prompted, you can also download and accept the certificate. Alternatively, create your own. See the cert command in the Nimble Command Line Reference Guide. Also, if Internet Explorer v7 displays a blank page, clear the browser's cache. The page should be visible after refreshing the browser.

4. In the login screen, type the password you set and click Log In. From this point forward, you are in the Nimble OS GUI. The first time you access the Nimble OS GUI, the Nimble Storage License Agreement appears.

5. In the Nimble Storage License Agreement, read the agreement, scroll to the bottom, check the acknowledgment box, and then click Proceed.

6. Provide the Subnet Configuration information for the following sections and click Next:

a. Management IP: IP address, Network and Subnet Mask.

The Management IP is used for the GUI, CLI, and replication. It resides on the management subnet and floats across all "Mgmt only" and "Mgmt + Data" interfaces on that subnet. Note: in this instance you only need to configure the Management network. No IP data network connectivity is required.

b. Subnet: Subnet label, Network, Netmask, Traffic Type (Data only, Mgmt only, Mgmt + Data), MTU. Maximum Transmission Unit (MTU): Standard (1500).

7. Provide Interface Assignment information for the following sections and click Next:

a. Interface Assignment: For each IP interface, assign it a subnet and a Data IP address within the specified network. For inactive interface, assign the "None" subnet.

b. Diagnostics:

i. Controller A diagnostics IP address will be on the same subnet as the management IP address.

ii. Controller B diagnostics IP address will be on the same subnet as the management IP address.

8. Provide the following Domain information and click Next:

a. Domain Name

b. DNS Servers: Type the hostname or IP address of your DNS server. You can list up to five servers.

9. Provide the following Time information and click Next:

a. Time Zone: Choose the time zone the array is located in.

b. Time (NTP) Server: Type the hostname or IP address of your NTP server.

10. Provide Support information for the following sections and click Finish.

11. Email Alerts:

a. From Address: This is the email address used by the array when sending email alerts. It does not need to be a real email address. Include the array name for easy identification.

b. Send to Address: Nimble recommends that you check the Send event data to Nimble Storage Support check box.

c. SMTP server hostname or IP address

d. AutoSupport:

i. Checking the Send AutoSupport data to Nimble Storage check box enables Nimble Storage Support to monitor your array, notify you of problems, and provide solutions.

ii. HTTP Proxy: AutoSupport and software updates require an HTTPS connection to the Internet, either directly or through a proxy server. If a proxy server is required, check the Use HTTP Proxy check box and provide the following information to configure proxy server details:

iii. HTTP proxy server hostname or IP address

iv. HTTP proxy server port

v. Proxy server user name

vi. Proxy server password

The system does not test the validity of the SMTP server connection or the email addresses that you provided.

12. Click Finish. The Setup Complete screen appears. Click Continue.

13. The Nimble OS home screen appears. Nimble Storage array setup is complete.

Configure Array to Send Email Notifications for Alerts (Optional)

To set up email notification of events from the Nimble Storage array, complete the following steps. This setup is optional but highly recommended.

1. On the Wellness page, click Daily Summary Emails.

2. Check Subscribe to daily summary email.

3. Enter an email address for delivery of the email alerts.

4. (Optional) You can click Test to send a test email to the email address that you indicated.

5. Click Submit to conclude the email alerts setup.

Setup Nimble Management Tools

vCenter Plugin

The vCenter plugin from Nimble Storage allows for single pane of glass administration directly from vCenter as well as integration with Nimble InfoSight analytics. Nimble Storage integrates with vCenter through plugin registration, which allows for datastore creation and management using vCenter. The vCenter plugin is supported on ESX 5.5 update 1 and later.

The plugin is not supported for:

- Multiple datastores located on one LUN

- One datastore spanning multiple LUNs

- LUNs located on a non-Nimble Storage array

For additional information, refer to the Nimble Storage VMware Integration Guide: https://infosight.nimblestorage.com/InfoSight/media/cms/active/pubs_VMware_Integration_Guide_aik1472500839218.ditamap.pdf

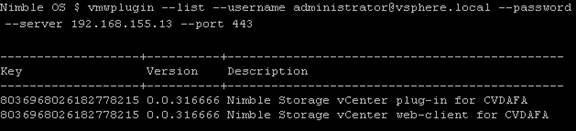

The procedure for registering the plugin is covered in the later section titled “Register the vCenter Plugin Using the NimbleOS CLI”.

InfoSight

Register and Log into InfoSight

To register and log in to InfoSight, complete the following steps.

It can take up to 24 hours for the array to appear in InfoSight after the first data set is sent. Data sets are configured to be sent around midnight array local time. Changes made right after the data set is sent at midnight might not be reflected in InfoSight for up to 48 hours.

1. Log in to the InfoSight portal at https://infosight.nimblestorage.com.

2. Click Enroll now to activate your account. If your email is not already registered, contact your InfoSight Administrator. If there is no existing InfoSight Administrator (Super User) registered against your account, or you are not sure, contact Nimble Storage Support for assistance.

3. Select the appropriate InfoSight role and enter the array serial number for your customer account. If this is the first account being created for your organization, you should select the Super User role. The number of super users is limited to the total number of arrays that are associated with an account.

4. Click Submit.

5. A temporary password is sent to the email address that you specified. You must change your password the first time you log in.

Configure Array to Send Data to InfoSight

To take full advantage of the InfoSight monitoring and analysis capabilities, configure your Nimble arrays to send data sets, statistics, and events to Nimble Storage Support. InfoSight recommendations and automatic fault detection are based on InfoSight processing the data from your arrays. If you do not configure the arrays to send this data to Nimble Storage Support during the initial setup, you can change the configuration at any time from the Administration menu in the GUI.

To configure the array to send data to InfoSight, complete the following steps.

1. From the Administration menu in the array GUI, select Alerts and Monitoring > AutoSupport / HTTP Proxy.

2. On the AutoSupport page, select Send AutoSupport data to Nimble Storage Support.

3. Click Test AutoSupport Settings to confirm that AutoSupport is set up correctly.

4. Click Save.

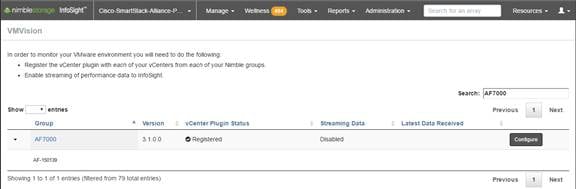

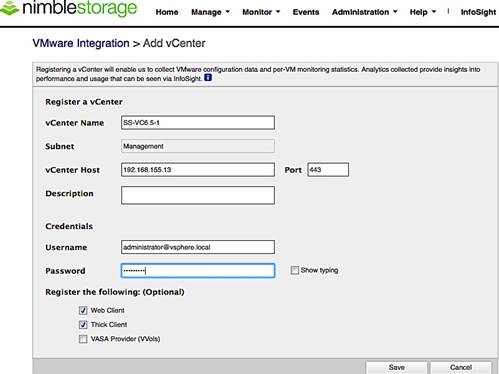

Configure Arrays to Monitor VMware Environment using VMVision

VMVision is part of InfoSight and provides visibility into the entire virtualization stack. It provides agentless per-VM monitoring and statistics, and it identifies the VMs with the most I/O churn and resource constraints. For additional information on VMVision, refer to: http://uploads.nimblestorage.com/wp-content/uploads/2015/07/12132211/nimblestorage-vmvision.pdf

In order to monitor your VMware environment using VMVision, the following steps must be completed.

· Register the vCenter plugin with each vCenter from each Nimble array group.

· Enable streaming of performance data to InfoSight.

To verify the vCenter plugin registration completed in an earlier step and to enable streaming of performance data, complete the following steps for each array individually if ungrouped, or one time for a group of arrays.

1. Log in to https://infosight.nimblestorage.com

2. Go to Administration > VMVision.

3. In the VMVision list, find the array group to monitor.

4. Verify that your software version is up to date and vCenter plugin is registered.

5. Click Configure. In the Configure Group dialog box that opens, select Enabled in the VM Streaming Data list and click Update.

This section provides detailed procedures for deploying and configuring Cisco MDS switches used in this solution for SAN connectivity.

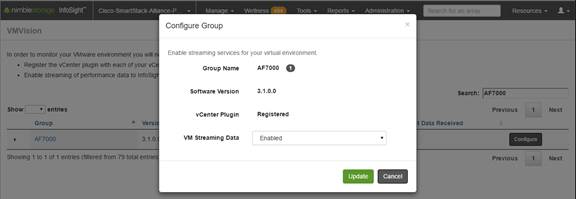

Cisco MDS Configuration Workflow

Figure 10 Cisco MDS Configuration Workflow

Base Setup using Configuration Dialog

This section provides details on the initial setup of Cisco MDS Fibre Channel Switches. Two switches, Cisco MDS-A and Cisco MDS-B are deployed to provide redundancy in the event of a switch failure.

Cisco MDS A

To set up the initial configuration for the first Cisco MDS switch complete the following steps:

On initial boot, connect to the serial or console port of the switch. The switch should automatically start and attempt to enter Power On Auto Provisioning.

1. Connect to the serial or console port of the switch.

Abort Auto Provisioning and continue with normal setup? (yes/no)[n]: y

---- System Admin Account Setup ----

Do you want to enforce secure password standard (yes/no) [y]:

Enter the password for "admin":

Confirm the password for "admin":

---- Basic System Configuration Dialog ----

This setup utility will guide you through the basic configuration of

the system. Setup configures only enough connectivity for management of the system.

Please register Cisco MDS 9000 Family devices promptly with your supplier. Failure to register may affect response times for initial service calls. MDS devices must be registered to receive entitled support services.

Press Enter at anytime to skip a dialog. Use ctrl-c at anytime to skip the remaining dialogs.

Would you like to enter the basic configuration dialog (yes/no): y

Create another login account (yes/no) [n]:

Configure read-only SNMP community string (yes/no) [n]:

Configure read-write SNMP community string (yes/no) [n]:

Enter the switch name: D01-MDS-A

Continue with Out-of-band (mgmt0) management configuration? (yes/no) [y]:

Mgmt0 IPv4 address: 192.168.155.6

Mgmt0 IPv4 netmask: 255.255.255.0

Configure the default gateway? (yes/no) [y]:

IPv4 address of the default gateway: 192.168.155.1

Configure advanced IP options? (yes/no) [n]:

Enable the ssh service? (yes/no) [y]:

Type of ssh key you would like to generate (dsa/rsa) [rsa]:

Number of rsa key bits <1024-2048> [1024]: 2048

Enable the telnet service? (yes/no) [n]:

Configure congestion/no credit drop for fc interfaces? (yes/no) [y]:

Enter the type of drop to configure congestion/no_credit drop? (con/no) [c]:

Enter milliseconds in multiples of 10 for congestion-drop for port mode F in range (<100-500>/default), where default is 500. [d]:

Congestion-drop for port mode E must be greater than or equal to Congestion-drop for port mode F. Hence, Congestion drop for port mode E will be set as default.

Enable the http-server? (yes/no) [y]:

Configure clock? (yes/no) [n]:

Configure timezone? (yes/no) [n]: y

Enter timezone config [PST/MST/CST/EST]: EST

Enter Hrs offset from UTC [-23:+23]: -5

Enter Minutes offset from UTC [0-59]:

Configure summertime? (yes/no) [n]:

Configure the ntp server? (yes/no) [n]: y

NTP server IPv4 address: 192.168.155.254

Configure default switchport interface state (shut/noshut) [shut]:

Configure default switchport trunk mode (on/off/auto) [on]:

Configure default switchport port mode F (yes/no) [n]:

Configure default zone policy (permit/deny) [deny]:

Enable full zoneset distribution? (yes/no) [n]:

Configure default zone mode (basic/enhanced) [basic]:

2. Review the settings printed to the console. If they are correct, answer yes to apply and save the configuration.

3. Wait for the login prompt to make sure that the configuration has been saved prior to proceeding.

Cisco MDS B

To set up the initial configuration for the second Cisco MDS switch complete the following steps:

On initial boot and connection to the serial or console port of the switch, the NX-OS setup should automatically start and attempt to enter Power on Auto Provisioning.

1. On initial boot, connect to the serial or console port of the switch.

2. The Cisco MDS B switch should present a configuration dialog identical to that of Cisco MDS A shown above. Provide the configuration parameters specific to Cisco MDS B for the following configuration variables. All other parameters should be identical to those of Cisco MDS A.

· Admin password

· MDS B Hostname: D01-MDS-B

· MDS B mgmt0 IP address: 192.168.155.7

· MDS B mgmt0 Netmask: 255.255.255.0

· MDS B mgmt0 Default Gateway: 192.168.155.1

· Timezone: EST

· Offset from UTC: -5

· NTP Server IP: 192.168.155.254
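The management addressing entered in the two setup dialogs can be sanity-checked before it is typed at the console. The following is a minimal Python sketch and is not part of the validated design. The helper name `check_mgmt_plan` is hypothetical, and the addresses are the example values used in this section.

```python
import ipaddress

# Example values copied from the MDS A and MDS B setup dialogs in this section.
MGMT_PLAN = {
    "D01-MDS-A": "192.168.155.6",
    "D01-MDS-B": "192.168.155.7",
}
NETMASK = "255.255.255.0"
GATEWAY = "192.168.155.1"


def check_mgmt_plan(plan, netmask, gateway):
    """Return a list of problems in the mgmt0 address plan (empty if none).

    Hypothetical helper for illustration only.
    """
    problems = []
    # Derive the management subnet from the gateway address and netmask.
    network = ipaddress.ip_network(f"{gateway}/{netmask}", strict=False)
    gateway_ip = ipaddress.ip_address(gateway)
    seen = {}
    for host, addr in plan.items():
        ip = ipaddress.ip_address(addr)
        if ip not in network:
            problems.append(f"{host}: {addr} is outside {network}")
        if ip == gateway_ip:
            problems.append(f"{host}: {addr} collides with the gateway")
        if addr in seen:
            problems.append(f"{host}: {addr} duplicates {seen[addr]}")
        seen[addr] = host
    return problems


if __name__ == "__main__":
    issues = check_mgmt_plan(MGMT_PLAN, NETMASK, GATEWAY)
    print("address plan OK" if not issues else "\n".join(issues))
```

An empty result only means the addresses are distinct and share the gateway's subnet; it does not confirm that the addresses are free on the network.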

Enable Global Features and Settings

The following features should be enabled globally on both Cisco MDS switches from configuration mode.

feature npiv

feature fport-channel-trunk

Create VSANs

One VSAN per fabric is used in this design: VSAN 4091 on Cisco MDS-A and VSAN 4092 on Cisco MDS-B. Configure the VSAN on each switch as follows.

Cisco MDS-A

vsan database

vsan 4091

Cisco MDS-B

vsan database

vsan 4092

Create Port Channels to Cisco UCS Fabric Interconnects

Each FI has a port channel link to one of the Cisco MDS switches for storage traffic. The port channel in this design has 4 links and is configured as follows.

Cisco MDS-A

1. Create the port channel to D01-FI-A.

interface port-channel 41

channel mode active

switchport rate-mode dedicated

2. Assign interfaces to the port channel.

interface fc1/45-48

port-license acquire

channel-group 41 force

no shutdown

3. Add port channel to VSAN in the VSAN database.

vsan database

vsan 4091 interface port-channel41

4. Save the configuration.

copy run start

Cisco MDS-B

1. Create the port channel to D01-FI-B.

interface port-channel 42

channel mode active

switchport rate-mode dedicated

2. Assign interfaces to the port channel.

interface fc1/45-48

port-license acquire

channel-group 42 force

no shutdown

3. Add port channel to VSAN in the VSAN database.

vsan database

vsan 4092 interface port-channel42

4. Save the configuration.

copy run start
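Because the two switches differ only in port channel number and VSAN, the per-fabric commands can be produced from one small table to reduce copy-and-paste mistakes. The sketch below is illustrative Python, not a Cisco tool. The function name `port_channel_config` is hypothetical, and the emitted lines simply mirror the commands listed in the steps above.

```python
# Per-fabric values taken from the port channel steps in this section.
FABRICS = {
    "D01-MDS-A": {"port_channel": 41, "vsan": 4091, "interfaces": "fc1/45-48"},
    "D01-MDS-B": {"port_channel": 42, "vsan": 4092, "interfaces": "fc1/45-48"},
}


def port_channel_config(port_channel, vsan, interfaces):
    """Return the configuration lines for one switch, in the order used above.

    Hypothetical helper: it only formats text and does not talk to a switch.
    """
    return [
        f"interface port-channel {port_channel}",
        "channel mode active",
        "switchport rate-mode dedicated",
        f"interface {interfaces}",
        "port-license acquire",
        f"channel-group {port_channel} force",
        "no shutdown",
        "vsan database",
        f"vsan {vsan} interface port-channel{port_channel}",
    ]


if __name__ == "__main__":
    for switch, values in FABRICS.items():
        print(f"! {switch}")
        print("\n".join(port_channel_config(**values)))
```

Review the generated text against the steps above before pasting it into configuration mode on each switch.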

Configure FC Interfaces to Unified Flash Fabric

Each MDS switch has four links to each Nimble array in the cluster, two links to Controller A and two to Controller B. This design uses 16G FC connectivity between Cisco MDS switches and Nimble arrays as the interfaces on both ends support 16G FC.

The detailed SAN Fabric connectivity to the Nimble Storage AF7000 all flash and CS5000 adaptive flash arrays used in this setup is shown in the figure below.

Figure 11 SAN Connectivity to Nimble Storage Arrays

Cisco MDS-A

1. Configure the speed and license for the ports connected to the AF7000 all flash array.

interface fc1/13-16

port-license acquire

no shutdown

2. Configure the speed and license for the ports connected to the CS5000 adaptive flash array.

interface fc1/25-28

port-license acquire

no shutdown

3. Add the interfaces to the VSAN in the VSAN database.

vsan database

vsan 4091 interface fc1/13-16

vsan 4091 interface fc1/25-28

4. Save the configuration.

copy run start

Cisco MDS-B

1. Configure the speed and license for the ports connected to the AF7000 all flash array.

interface fc1/13-16

port-license acquire

no shutdown

2. Configure the speed and license for the ports connected to the CS5000 adaptive flash array.

interface fc1/25-28

port-license acquire

no shutdown

3. Add the interfaces to the VSAN in the VSAN database.

vsan database

vsan 4092 interface fc1/13-16

vsan 4092 interface fc1/25-28

4. Save the configuration.

copy run start
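The interface ranges above can be expanded programmatically to confirm that each switch really has four links to each array, as the design states. This is a minimal Python sketch under stated assumptions: `expand_fc_range` and `links_per_array` are hypothetical helper names, and the ranges are the ones used in this section.

```python
import re

# Interface ranges used on each MDS switch in this section.
ARRAY_PORTS = {"AF7000": "fc1/13-16", "CS5000": "fc1/25-28"}


def expand_fc_range(spec):
    """Expand a range such as 'fc1/13-16' into individual interface names."""
    # Accept a hyphen or an en dash, with optional spaces around it.
    match = re.fullmatch(r"fc(\d+)/(\d+)\s*[-\u2013]\s*(\d+)", spec.strip())
    if not match:
        raise ValueError(f"not an fc interface range: {spec!r}")
    slot, first, last = (int(group) for group in match.groups())
    if last < first:
        raise ValueError(f"range end precedes range start: {spec!r}")
    return [f"fc{slot}/{port}" for port in range(first, last + 1)]


def links_per_array(array_ports):
    """Count the links each array has on one switch."""
    return {array: len(expand_fc_range(spec)) for array, spec in array_ports.items()}


if __name__ == "__main__":
    print(links_per_array(ARRAY_PORTS))
```

The expanded interface names can also be compared against the INTERFACE column of the FLOGI database once the ports are up.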

Configure Device Aliases for Unified Flash Fabric

Using the FLOGI database information on Cisco MDS switches, configure device-aliases for the WWPN IDs received from the Unified Flash Fabric as shown below.

Configure Device Aliases for the Nimble Storage Arrays

Cisco MDS-A

1. Run the ‘show flogi database’ command to obtain the WWPN information for the Nimble arrays.

D01-MDS-A# show flogi database

----------------------------------------------------------------------

INTERFACE VSAN FCID PORT NAME NODE NAME

----------------------------------------------------------------------

fc1/13 4091 0x940e00 56:c9:ce:90:c3:b3:20:09 56:c9:ce:90:c3:b3:20:00

fc1/14 4091 0x940f00 56:c9:ce:90:c3:b3:20:0b 56:c9:ce:90:c3:b3:20:00

fc1/15 4091 0x941000 56:c9:ce:90:c3:b3:20:0d 56:c9:ce:90:c3:b3:20:00

fc1/16 4091 0x941100 56:c9:ce:90:c3:b3:20:0f 56:c9:ce:90:c3:b3:20:00

fc1/25 4091 0x941200 56:c9:ce:90:c3:b3:20:01 56:c9:ce:90:c3:b3:20:00

fc1/26 4091 0x941300 56:c9:ce:90:c3:b3:20:02 56:c9:ce:90:c3:b3:20:00

fc1/27 4091 0x941400 56:c9:ce:90:c3:b3:20:05 56:c9:ce:90:c3:b3:20:00

fc1/28 4091 0x941500 56:c9:ce:90:c3:b3:20:06 56:c9:ce:90:c3:b3:20:00

2. Configure device aliases for the WWPNs above and commit them as follows.

device-alias confirm-commit enable

device-alias database

device-alias name AF7k-CNTLA-FC1 pwwn 56:c9:ce:90:c3:b3:20:09

device-alias name AF7k-CNTLA-FC5 pwwn 56:c9:ce:90:c3:b3:20:0b

device-alias name AF7k-CNTLB-FC1 pwwn 56:c9:ce:90:c3:b3:20:0d

device-alias name AF7k-CNTLB-FC5 pwwn 56:c9:ce:90:c3:b3:20:0f

device-alias name CS5k-CNTLA-FC1 pwwn 56:c9:ce:90:c3:b3:20:01

device-alias name CS5k-CNTLA-FC5 pwwn 56:c9:ce:90:c3:b3:20:02

device-alias name CS5k-CNTLB-FC1 pwwn 56:c9:ce:90:c3:b3:20:05

device-alias name CS5k-CNTLB-FC5 pwwn 56:c9:ce:90:c3:b3:20:06

device-alias commit

3. Save the configuration.

copy run start

Cisco MDS-B

1. Run the ‘show flogi database’ command to obtain the WWPN information for the Nimble arrays.

D01-MDS-B# show flogi database

-------------------------------------------------------------------

INTERFACE VSAN FCID PORT NAME NODE NAME

-------------------------------------------------------------------

fc1/13 4092 0x4b0d00 56:c9:ce:90:c3:b3:20:0a 56:c9:ce:90:c3:b3:20:00

fc1/14 4092 0x4b0e00 56:c9:ce:90:c3:b3:20:0c 56:c9:ce:90:c3:b3:20:00

fc1/15 4092 0x4b0b00 56:c9:ce:90:c3:b3:20:0e 56:c9:ce:90:c3:b3:20:00

fc1/16 4092 0x4b0c00 56:c9:ce:90:c3:b3:20:10 56:c9:ce:90:c3:b3:20:00

fc1/25 4092 0x4b0f00 56:c9:ce:90:c3:b3:20:03 56:c9:ce:90:c3:b3:20:00

fc1/26 4092 0x4b1000 56:c9:ce:90:c3:b3:20:04 56:c9:ce:90:c3:b3:20:00

fc1/27 4092 0x4b1100 56:c9:ce:90:c3:b3:20:07 56:c9:ce:90:c3:b3:20:00

fc1/28 4092 0x4b1200 56:c9:ce:90:c3:b3:20:08 56:c9:ce:90:c3:b3:20:00

2. Configure device aliases for the WWPNs above and commit them as follows.

device-alias confirm-commit enable

device-alias database

device-alias name AF7k-CNTLA-FC2 pwwn 56:c9:ce:90:c3:b3:20:0a

device-alias name AF7k-CNTLA-FC6 pwwn 56:c9:ce:90:c3:b3:20:0c

device-alias name AF7k-CNTLB-FC2 pwwn 56:c9:ce:90:c3:b3:20:0e

device-alias name AF7k-CNTLB-FC6 pwwn 56:c9:ce:90:c3:b3:20:10

device-alias name CS5k-CNTLA-FC2 pwwn 56:c9:ce:90:c3:b3:20:03

device-alias name CS5k-CNTLA-FC6 pwwn 56:c9:ce:90:c3:b3:20:04

device-alias name CS5k-CNTLB-FC2 pwwn 56:c9:ce:90:c3:b3:20:07

device-alias name CS5k-CNTLB-FC6 pwwn 56:c9:ce:90:c3:b3:20:08

device-alias commit

3. Save the configuration.

copy run start
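Transcribing sixteen WWPNs by hand is error prone, so the device-alias commands can be derived from captured FLOGI output instead. The following Python sketch assumes the output has the five-column layout shown above. The names `parse_flogi` and `device_alias_commands` are hypothetical, and the interface-to-alias mapping shown is the first two rows of the Cisco MDS-A example.

```python
import re

WWN = r"(?:[0-9a-fA-F]{2}:){7}[0-9a-fA-F]{2}"
# One data row: interface, VSAN, FCID, port name (pWWN), node name.
FLOGI_ROW = re.compile(
    rf"^(fc\d+/\d+)\s+(\d+)\s+(0x[0-9a-fA-F]+)\s+({WWN})\s+({WWN})$"
)

# Sample rows copied from the Cisco MDS-A output above.
SAMPLE = """\
INTERFACE VSAN FCID PORT NAME NODE NAME
fc1/13 4091 0x940e00 56:c9:ce:90:c3:b3:20:09 56:c9:ce:90:c3:b3:20:00
fc1/14 4091 0x940f00 56:c9:ce:90:c3:b3:20:0b 56:c9:ce:90:c3:b3:20:00
"""

# Alias chosen for each interface, following the naming used in this section.
ALIASES = {"fc1/13": "AF7k-CNTLA-FC1", "fc1/14": "AF7k-CNTLA-FC5"}


def parse_flogi(text):
    """Return {interface: pwwn} for every data row; other lines are ignored."""
    entries = {}
    for line in text.splitlines():
        match = FLOGI_ROW.match(line.strip())
        if match:
            entries[match.group(1)] = match.group(4).lower()
    return entries


def device_alias_commands(entries, aliases):
    """Build the device-alias command sequence used in the steps above."""
    lines = ["device-alias confirm-commit enable", "device-alias database"]
    for interface, alias in aliases.items():
        # A KeyError here means the interface has not logged in to the fabric.
        lines.append(f"device-alias name {alias} pwwn {entries[interface]}")
    lines.append("device-alias commit")
    return lines


if __name__ == "__main__":
    print("\n".join(device_alias_commands(parse_flogi(SAMPLE), ALIASES)))
```

The mapping from interface to alias still has to come from the cabling plan; the sketch only removes the manual copying of WWPNs.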

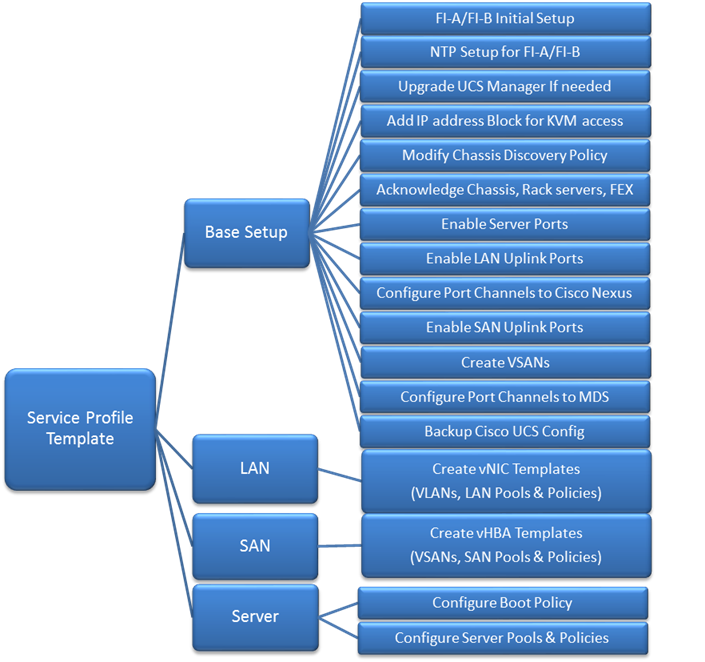

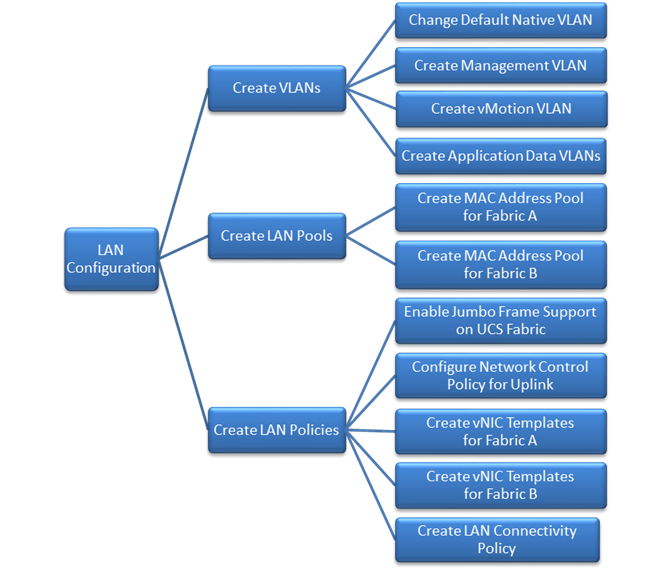

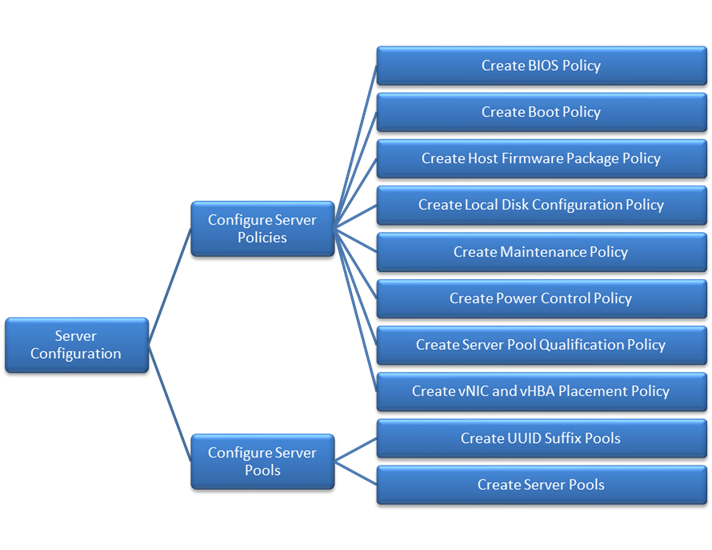

Cisco UCS Configuration Workflow

The figure below shows a high level workflow for deploying Cisco UCS servers using Cisco UCS Manager. Service Profile Templates enable the rapid deployment and configuration of Cisco UCS servers by consolidating the configuration policies and parameters in a template format, so that new servers can be deployed without configuring each one individually.

Figure 12 High Level Workflow for deploying and configuring Cisco UC

Cisco UCS Configuration – Base Setup

This section outlines the initial setup necessary to deploy a new Cisco UCS domain in a Cisco-Nimble environment using a pair of Cisco UCS Fabric interconnects (FI) with embedded Cisco UCS Manager for management.

Initial Setup of Cisco Fabric Interconnects

A pair of Cisco UCS 6248UP Fabric Interconnects is used in this design. The minimum configuration required to bring up the FIs and the embedded Cisco UCS Manager (UCSM) is outlined below. All subsequent configuration is done using Cisco UCS Manager.

Cisco UCS 6248UP FI – Primary (FI-A)

1. Connect to the console port of the primary Cisco UCS FI.

Enter the configuration method: console

Enter the setup mode; setup newly or restore from backup. (setup/restore)? setup

You have chosen to setup a new fabric interconnect? Continue? (y/n): y

Enforce strong passwords? (y/n) [y]: y

Enter the password for "admin": <Enter Password>

Enter the same password for "admin": <Enter Password>

Is this fabric interconnect part of a cluster (select 'no' for standalone)? (yes/no)[n]: y

Which switch fabric (A|B): A

Enter the system name: D01-FI-A

Physical switch Mgmt0 IPv4 address: 192.168.155.8

Physical switch Mgmt0 IPv4 netmask: 255.255.255.0

IPv4 address of the default gateway: 192.168.155.1

Cluster IPv4 address: 192.168.155.89

Configure DNS Server IPv4 address? (yes/no) [no]: y

DNS IPv4 address: 192.168.155.15

Configure the default domain name? y

Default domain name: smartstack.local

Join centralized management environment (UCS Central)? (yes/no) [n]: <Enter>

2. Review the settings printed to the console. If they are correct, answer yes to apply and save the configuration.

3. Wait for the login prompt to make sure that the configuration has been saved prior to proceeding.

Cisco UCS 6248UP FI – Secondary (FI-B)

1. Connect to the console port of the second Cisco UCS 6248UP FI.

Enter the configuration method: console

Installer has detected the presence of a peer Fabric interconnect. This Fabric interconnect will be added to the cluster. Do you want to continue {y|n}? y

Enter the admin password for the peer fabric interconnect: <Enter Password>

Physical switch Mgmt0 IPv4 address: 192.168.155.9

Apply and save the configuration (select ‘no’ if you want to re-enter)? (yes/no): y

2. Verify the above configuration by using Secure Shell (SSH) to log in to each FI and check the cluster status. The status should be as follows if the cluster is up and running properly.

D01-FI-A# show cluster state

Cluster Id: 0x8cb67462eb0c11e2-0x9fff002a6a419c64

A: UP, PRIMARY

B: UP, SUBORDINATE

HA READY

D01-FI-A#

3. Now you’re ready to log into Cisco UCS Manager using either the individual or cluster IPs of the Cisco UCS Fabric Interconnects.
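The cluster check in step 2 can be automated against captured `show cluster state` output. The sketch below is illustrative Python that assumes the output matches the format shown above. The function name `cluster_is_healthy` is hypothetical, and collecting the output over SSH is left out.

```python
# Sample output copied from the verification step above.
SAMPLE = """\
Cluster Id: 0x8cb67462eb0c11e2-0x9fff002a6a419c64
A: UP, PRIMARY
B: UP, SUBORDINATE
HA READY
"""


def cluster_is_healthy(output):
    """Return True when both FIs are UP, one is PRIMARY, one is SUBORDINATE,
    and the HA READY line is present. Hypothetical helper for illustration."""
    state = {}
    ha_ready = False
    for raw in output.splitlines():
        line = raw.strip()
        if line == "HA READY":
            ha_ready = True
        elif line.startswith(("A:", "B:")):
            # Split "A: UP, PRIMARY" into the fabric ID and its status fields.
            fabric, _, rest = line.partition(":")
            state[fabric] = [part.strip() for part in rest.split(",")]
    all_up = len(state) == 2 and all(s[0] == "UP" for s in state.values())
    roles = sorted(s[1] for s in state.values() if len(s) == 2)
    return ha_ready and all_up and roles == ["PRIMARY", "SUBORDINATE"]


if __name__ == "__main__":
    print("cluster healthy:", cluster_is_healthy(SAMPLE))
```

Any output that deviates from the sample, such as a missing HA READY line, is treated as unhealthy so that it gets a manual look.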

Log into Cisco UCS Manager

To log into the Cisco Unified Computing System (UCS) environment, complete the following steps:

1. Open a web browser and navigate to the Cisco UCS 6248 Fabric Interconnect cluster IP address configured in the earlier step.

2. Click the Launch Cisco UCS Manager link to download the Cisco UCS Manager software.

3. If prompted, accept security certificates as necessary.

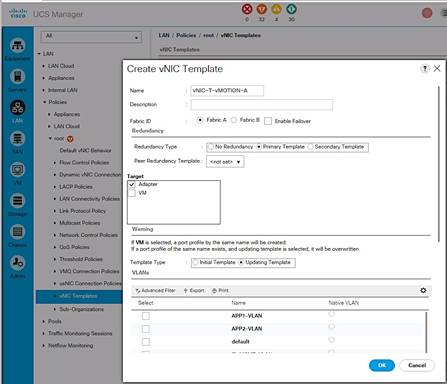

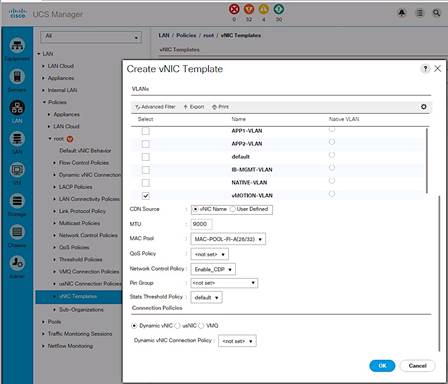

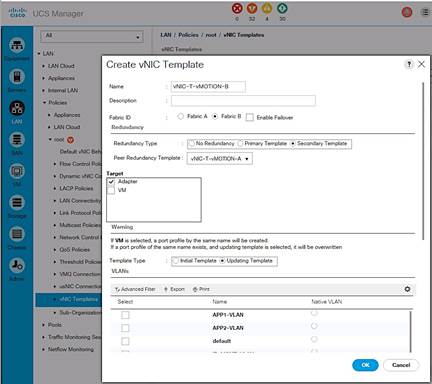

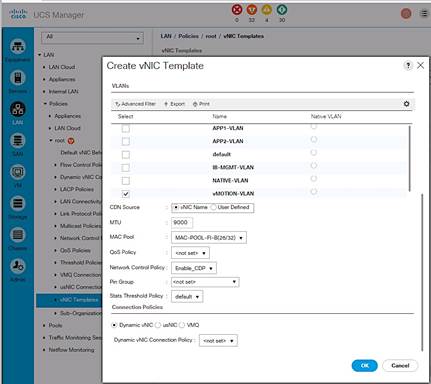

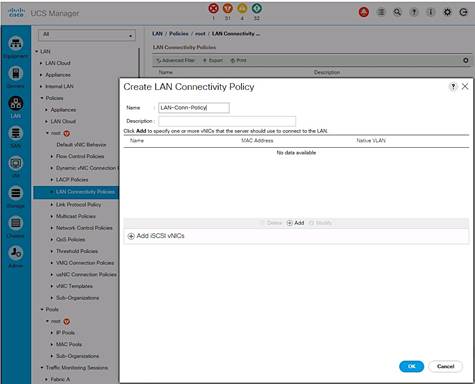

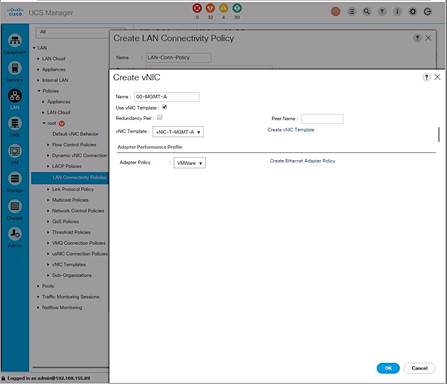

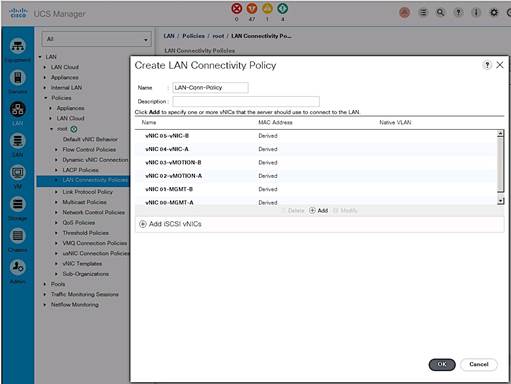

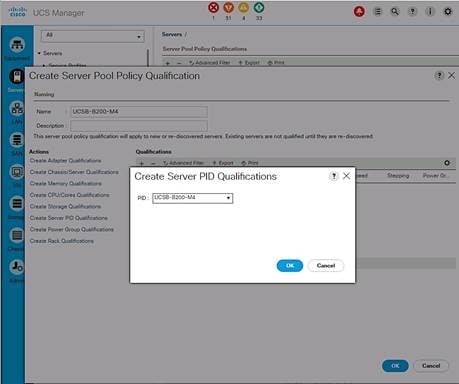

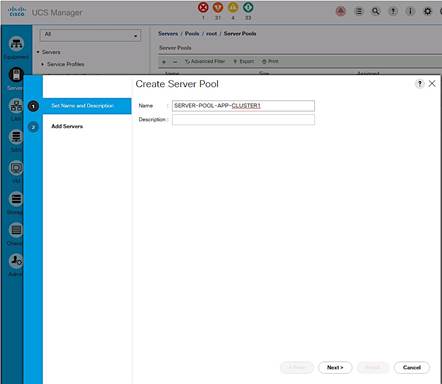

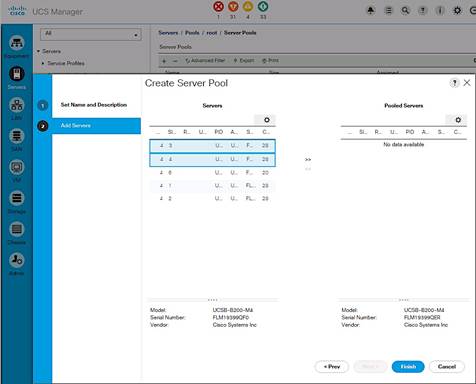

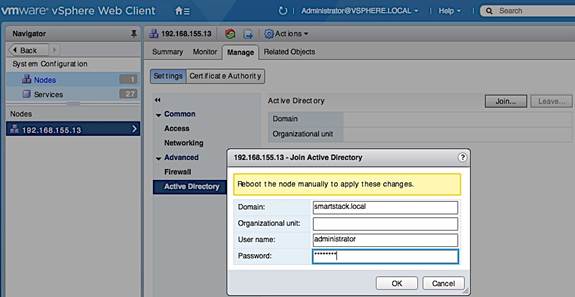

4. When prompted, enter admin as the user name and enter the administrative password.