Cisco UCS Integrated Infrastructure for Big Data with SAP HANA Vora

Available Languages

Cisco UCS Integrated Infrastructure for Big Data and Cisco ACI with SAP HANA Vora for In-memory Analytics

Last Updated: January 25, 2016

The CVD program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information visit

http://www.cisco.com/go/designzone.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

CCDE, CCENT, Cisco Eos, Cisco Lumin, Cisco Nexus, Cisco StadiumVision, Cisco TelePresence, Cisco WebEx, the Cisco logo, DCE, and Welcome to the Human Network are trademarks; Changing the Way We Work, Live, Play, and Learn and Cisco Store are service marks; and Access Registrar, Aironet, AsyncOS, Bringing the Meeting To You, Catalyst, CCDA, CCDP, CCIE, CCIP, CCNA, CCNP, CCSP, CCVP, Cisco, the Cisco Certified Internetwork Expert logo, Cisco IOS, Cisco Press, Cisco Systems, Cisco Systems Capital, the Cisco Systems logo, Cisco Unity, Collaboration Without Limitation, EtherFast, EtherSwitch, Event Center, Fast Step, Follow Me Browsing, FormShare, GigaDrive, HomeLink, Internet Quotient, IOS, iPhone, iQuick Study, IronPort, the IronPort logo, LightStream, Linksys, MediaTone, MeetingPlace, MeetingPlace Chime Sound, MGX, Networkers, Networking Academy, Network Registrar, PCNow, PIX, PowerPanels, ProConnect, ScriptShare, SenderBase, SMARTnet, Spectrum Expert, StackWise, The Fastest Way to Increase Your Internet Quotient, TransPath, WebEx, and the WebEx logo are registered trademarks of Cisco Systems, Inc. and/or its affiliates in the United States and certain other countries.

All other trademarks mentioned in this document or website are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (0809R)

© 2016 Cisco Systems, Inc. All rights reserved.

Table of Contents

Cisco UCS and SAP HANA Vora Deliver a New Dimension to Big Data Analytics

Cisco UCS Integrated Infrastructure for Big Data

Cisco UCS 6200 Series Fabric Interconnects

Cisco UCS C-Series Rack Mount Servers

Cisco UCS Virtual Interface Cards (VICs)

Cisco Application Centric Infrastructure (ACI) Overview

Architectural Benefits of Using Fabric Interconnect with Cisco ACI

Centralized Management for the Entire Network

Multi-Tenant and Mixed Workload Support

Easy Migration to 40 Gbps in the Network

ACI Spine Line Card for Nexus 9508

Application Policy Infrastructure Controller (APIC)

Cisco UCS Datacenter Solution for SAP HANA

Architecture referenced in this guide (Flexpod Datacenter for SAP Solution with Cisco ACI)

Hortonworks Data Platform (HDP 2.2)

Enterprise SQL at Scale in Hadoop

Kafka for Processing the Internet of Things

Physical Layout for the Solution

Software Distributions and Versions

Red Hat Enterprise Linux (RHEL)

Hortonworks Data Platform (HDP 2.2)

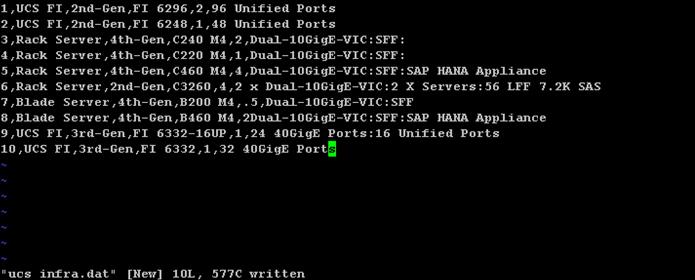

Deployment Hardware and Software

Scaling the Architecture Further with Additional Spine Switches

SAP HANA and SAP HANA VORA scalability

Configuration Parameters for the Tenants

Switch Discovery with the APIC

Switch Registration with the APIC Cluster

Validating the Fabric Topology

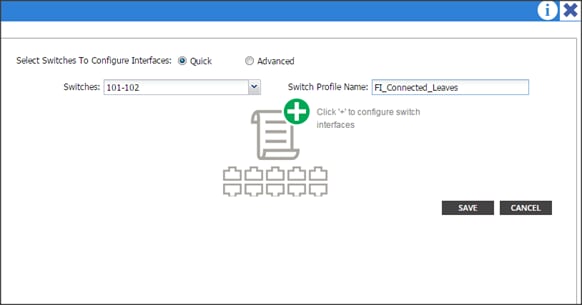

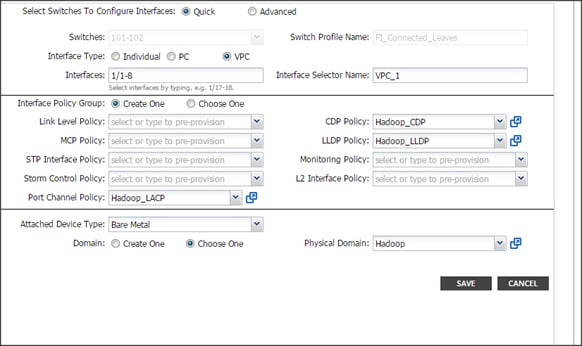

Configuring the VPC Ports for the Fabric Interconnect

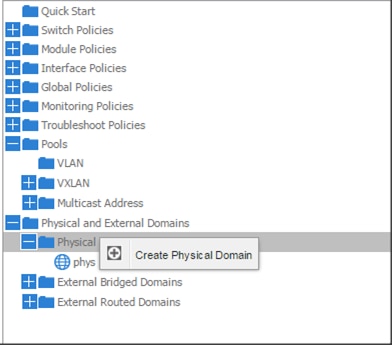

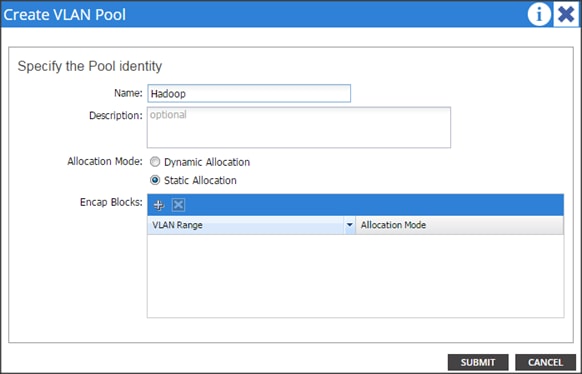

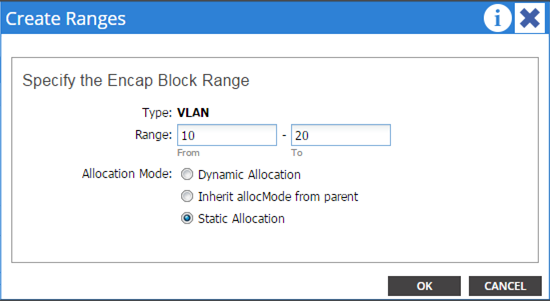

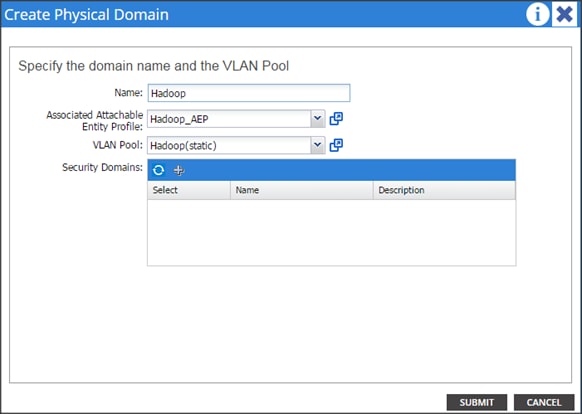

Create a Physical Domain and vlan Pool

Configuring the Switch Interface for UCSDE

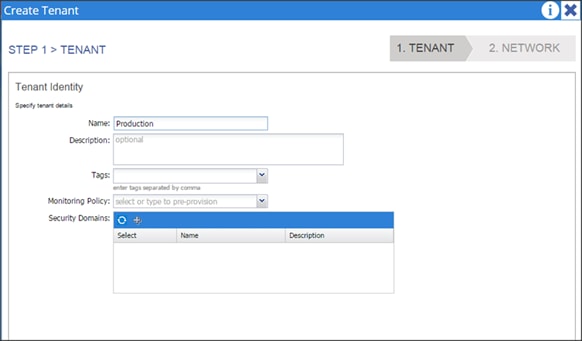

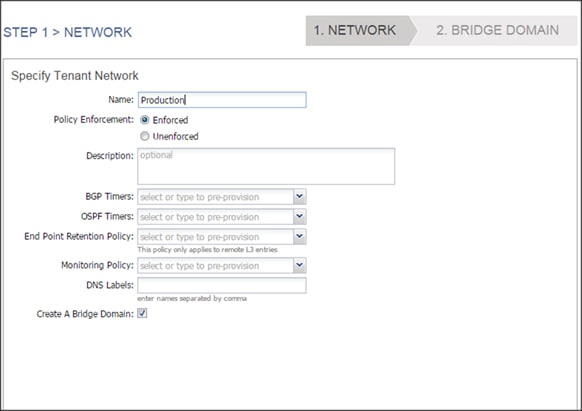

Creating Tenants, Private Network, and Bridge Domains

Creating a Tenant, Private Network, and Bridge Domain Using the GUI

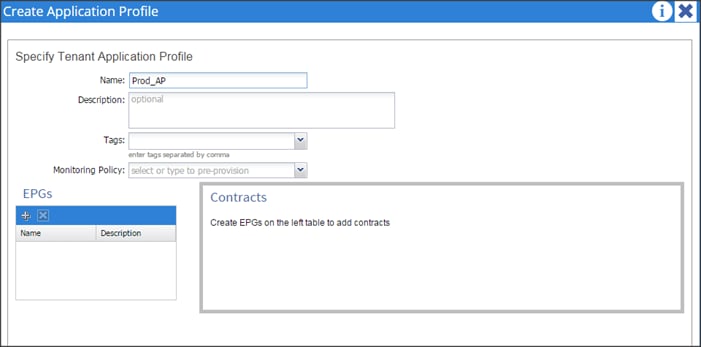

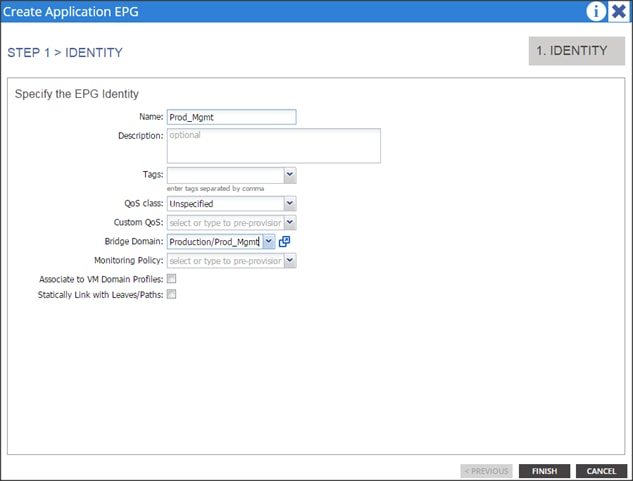

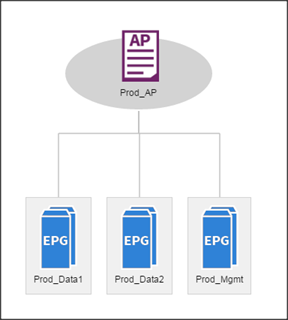

Creating an Application Profile Using the GUI

Creating the Static Bindings for the Leaves and Paths

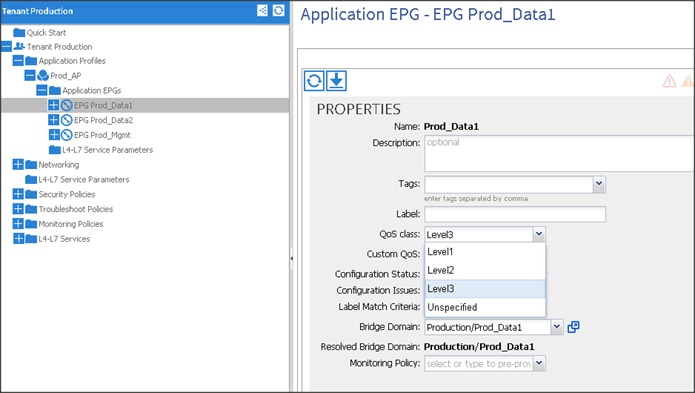

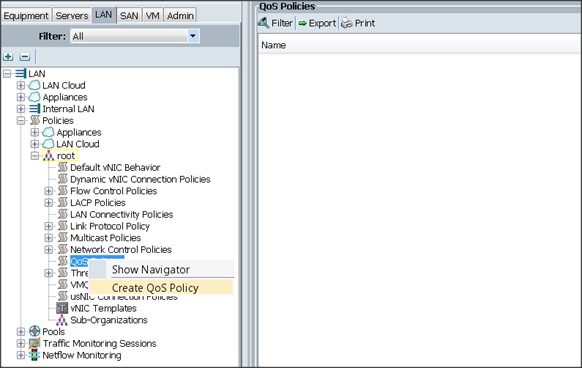

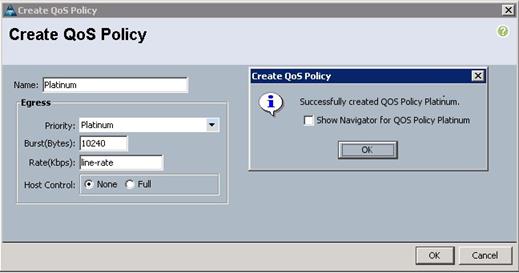

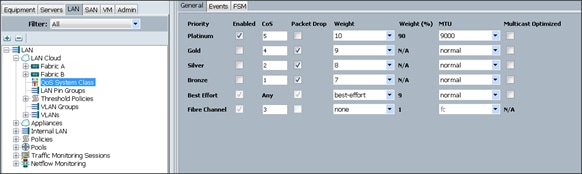

Configuring QOS policy for EPG

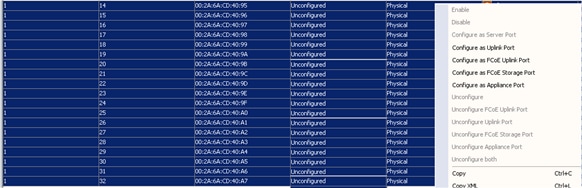

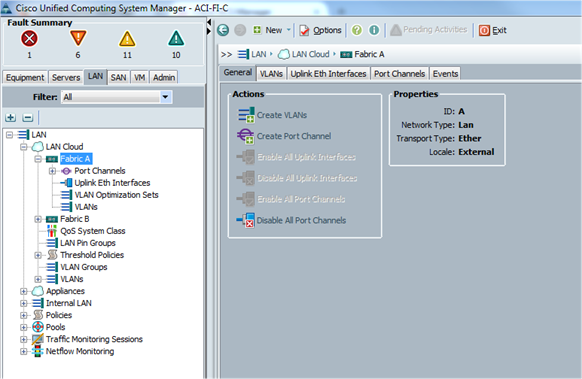

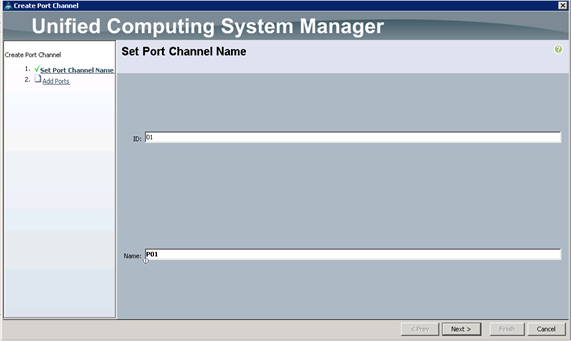

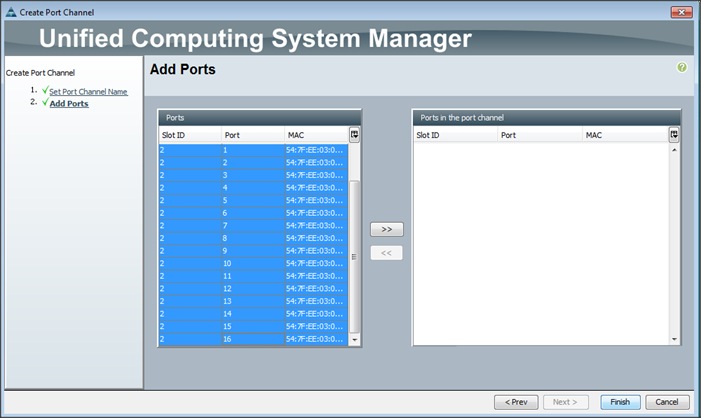

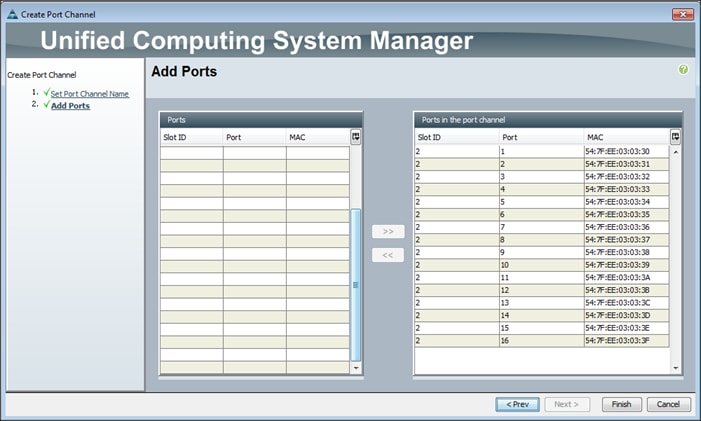

Adding Ports to the Port Channel

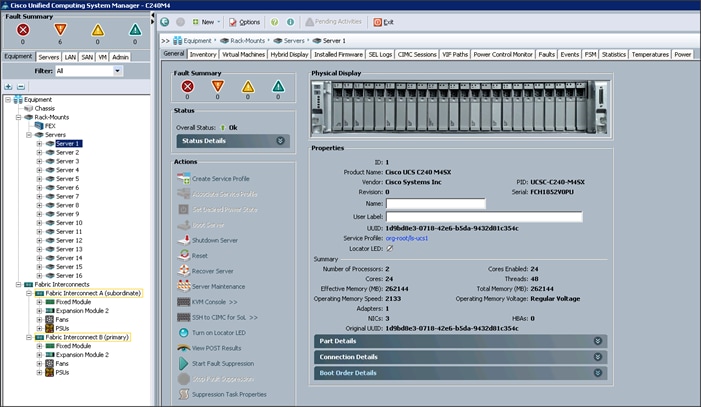

Server Configuration and Cabling for C240M4

Initial setup of the Fabric Interconnect A and B.

Configure Fabric Interconnect A

Configure Fabric Interconnect B

Logging Into Cisco UCS Manager

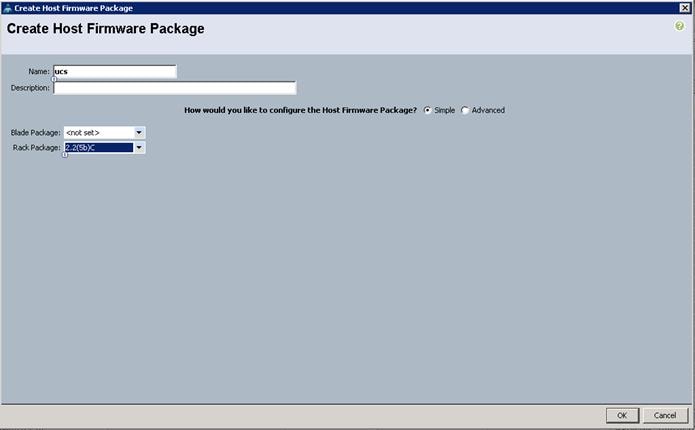

Upgrading UCSM Software to Version 2.2(5b)

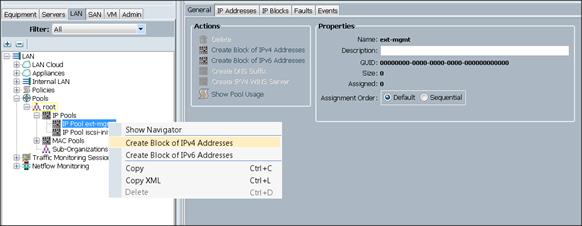

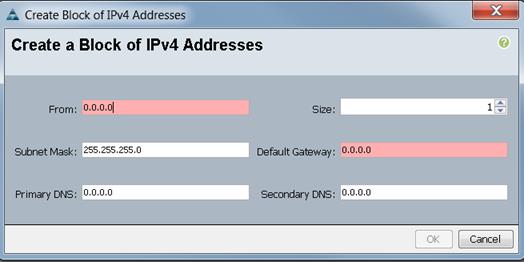

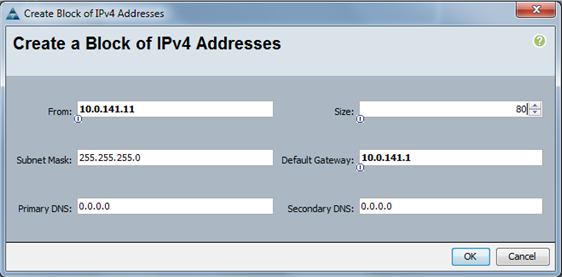

Adding Block of IP Addresses for KVM Access

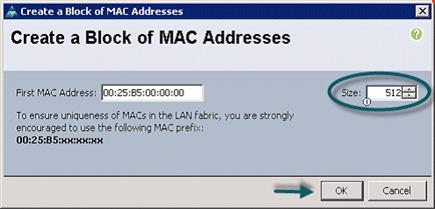

Creating Pools for Service Profile Templates

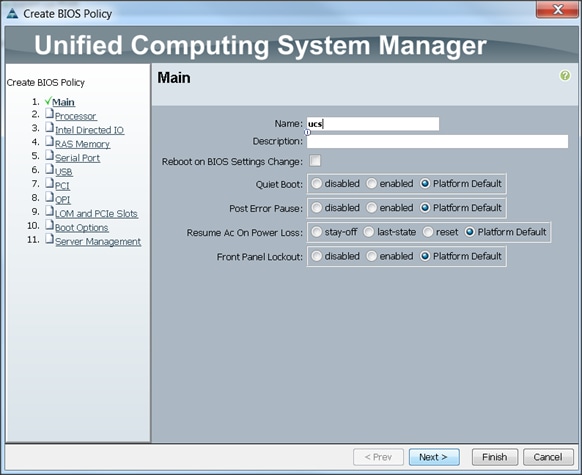

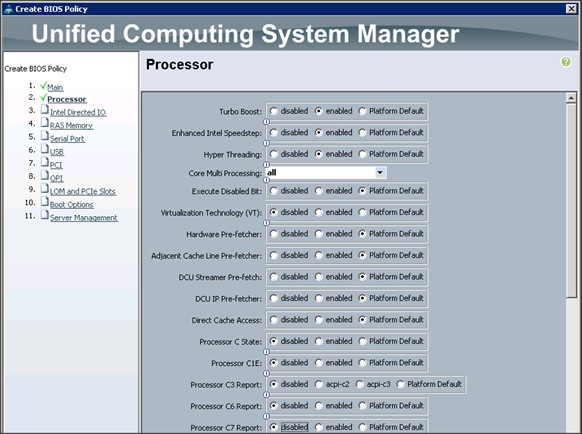

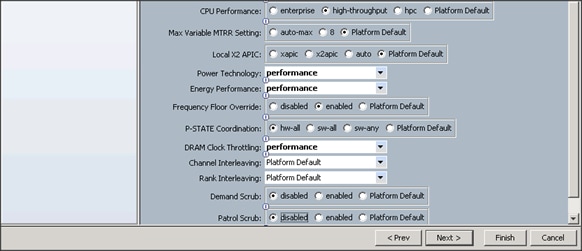

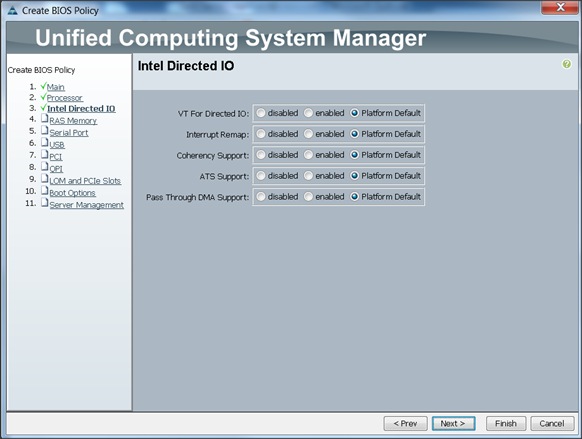

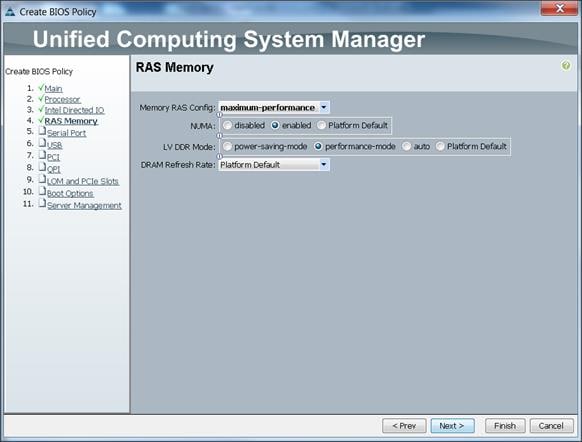

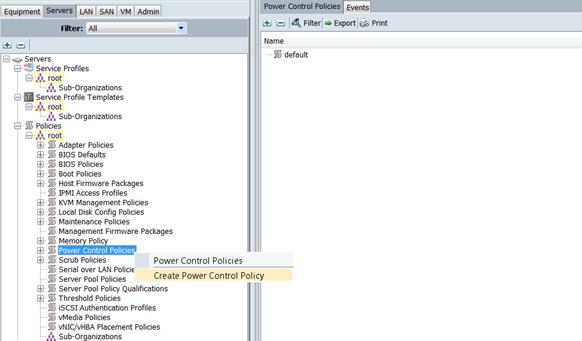

Creating Policies for Service Profile Templates

Creating Host Firmware Package Policy

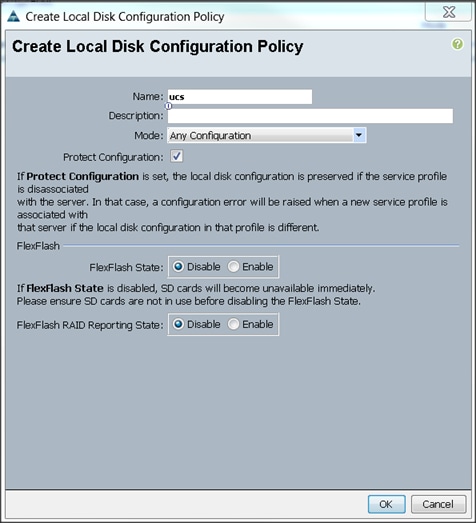

Creating Local Disk Configuration Policy

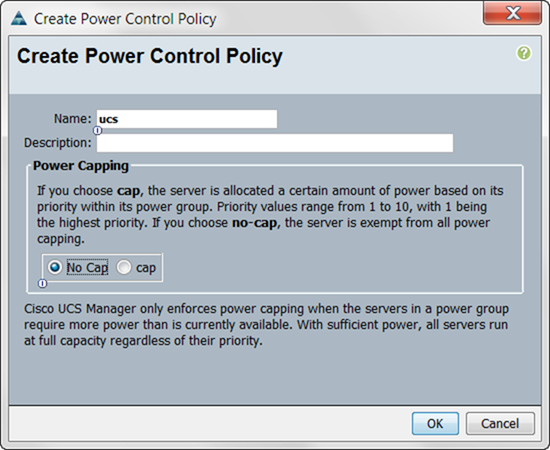

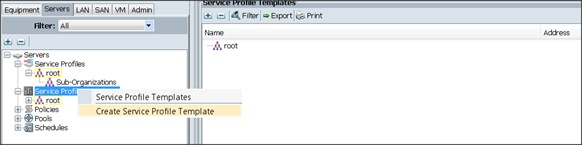

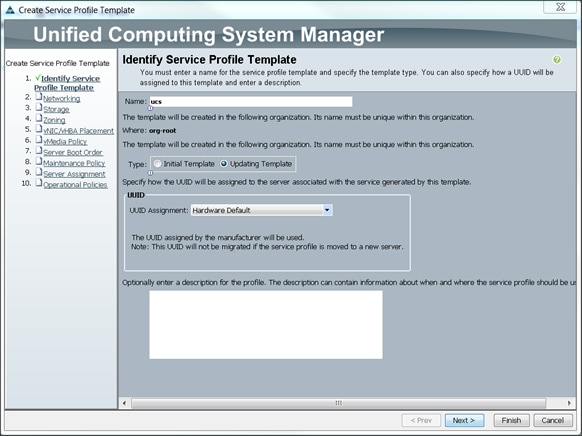

Creating Service Profile Template

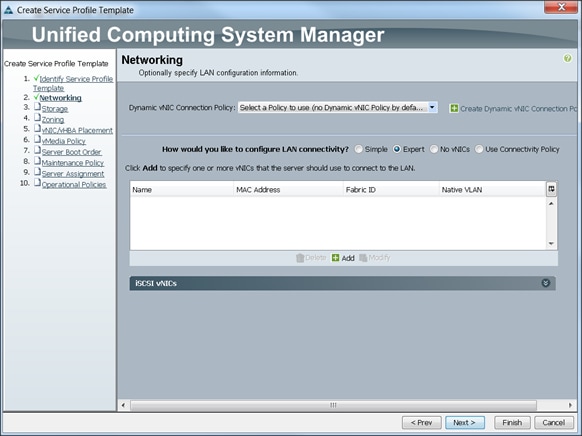

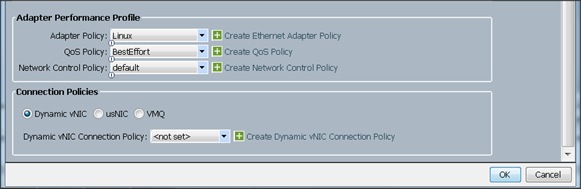

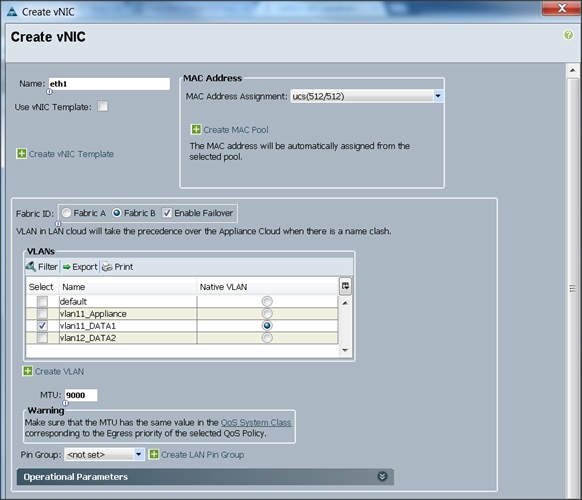

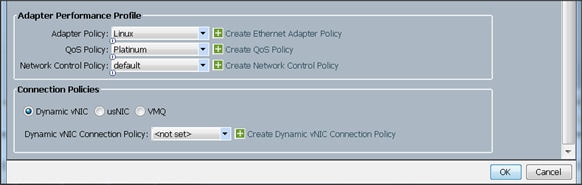

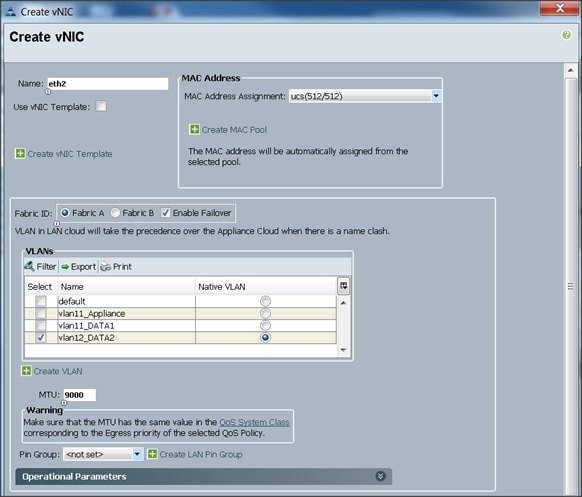

Configuring Network Settings for the Template

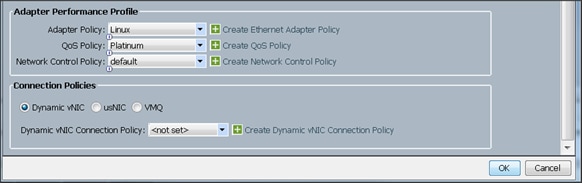

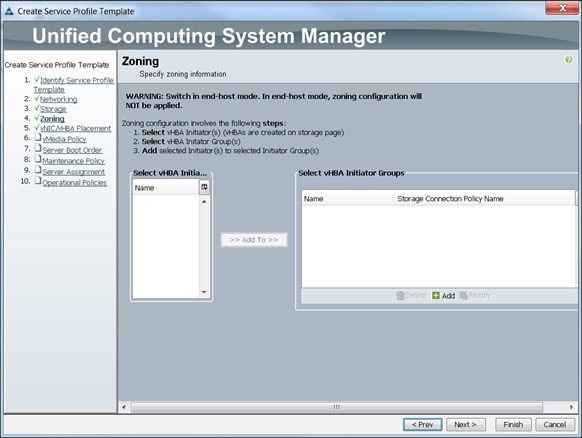

Configuring Storage Policy for the Template

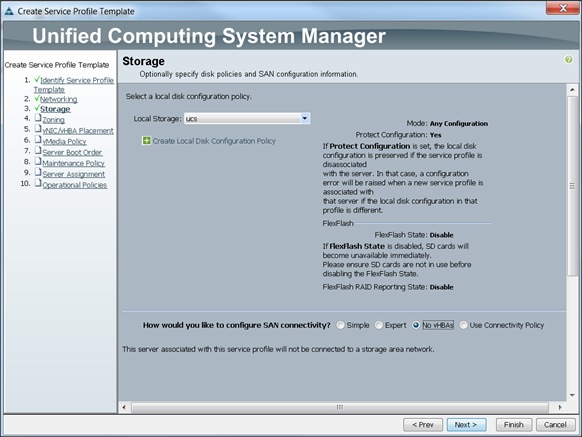

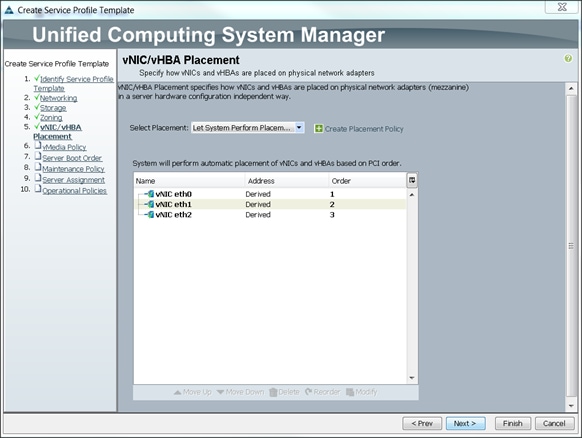

Configuring vNIC/vHBA Placement for the Template

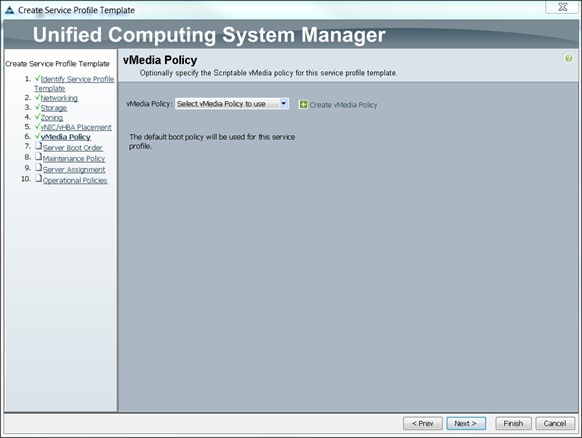

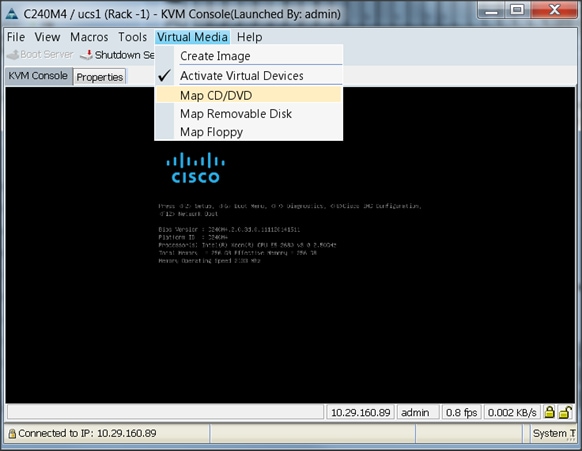

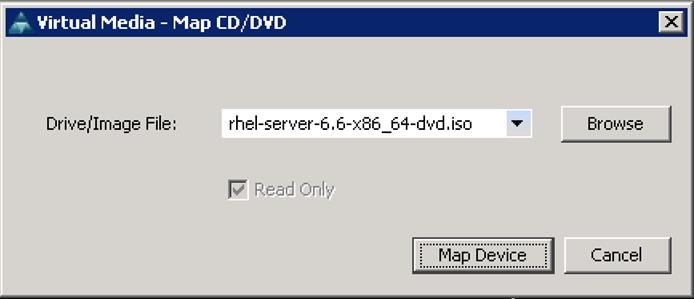

Configuring vMedia Policy for the Template

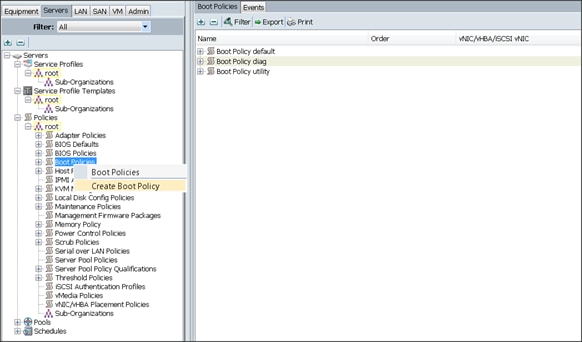

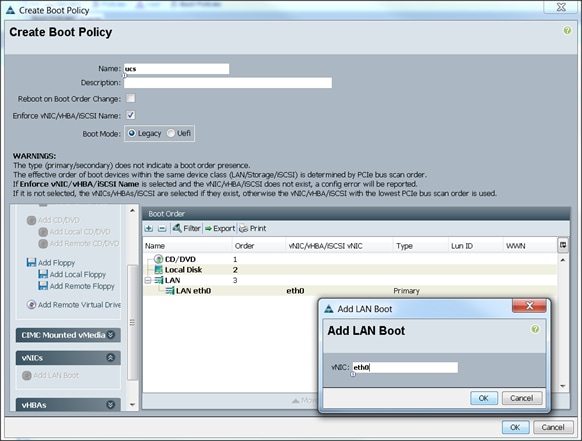

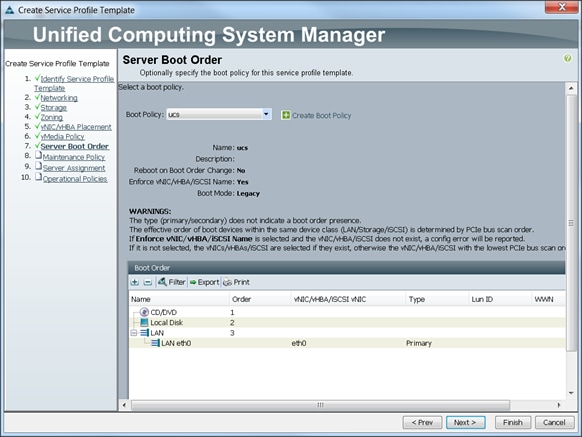

Configuring Server Boot Order for the Template

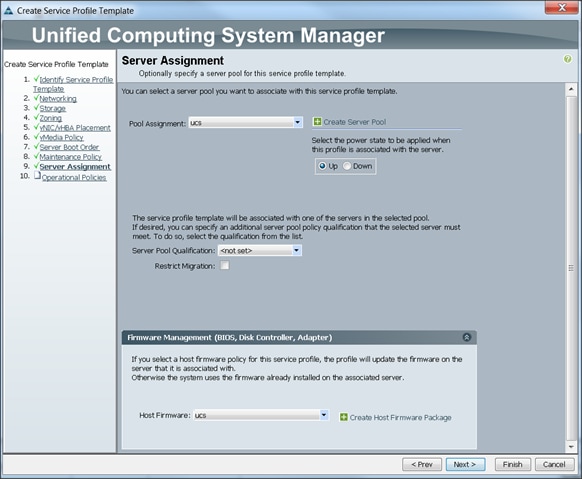

Configuring Server Assignment for the Template

Configuring Operational Policies for the Template

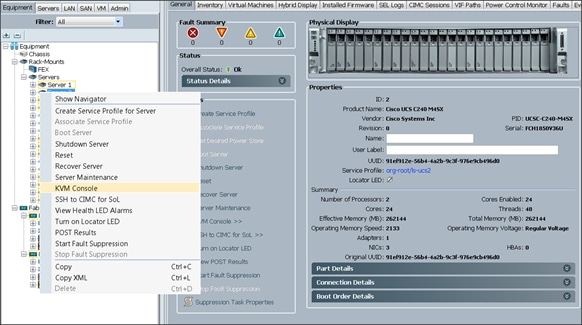

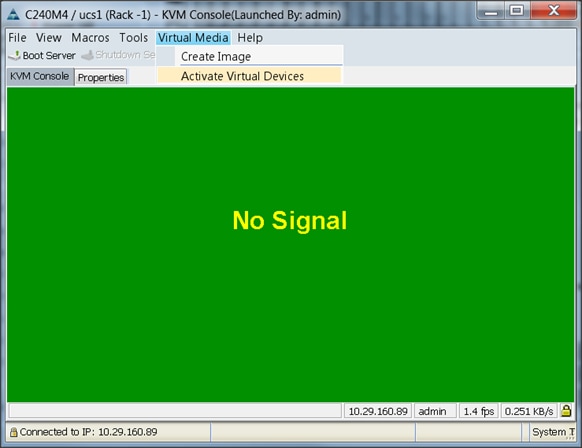

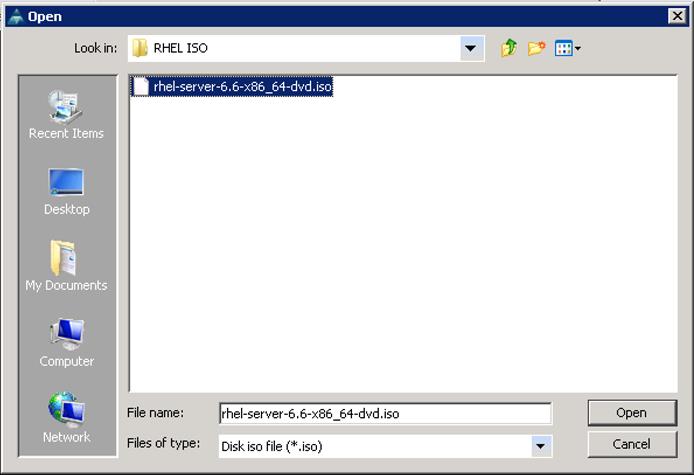

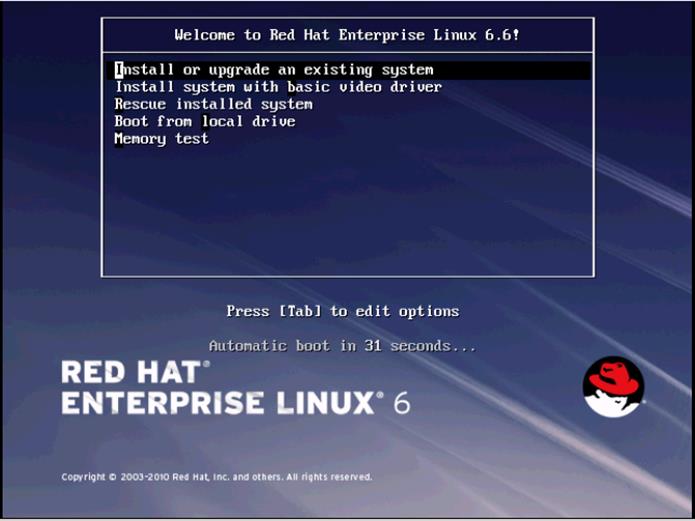

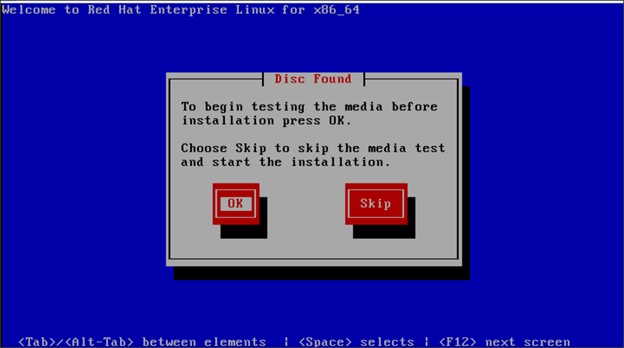

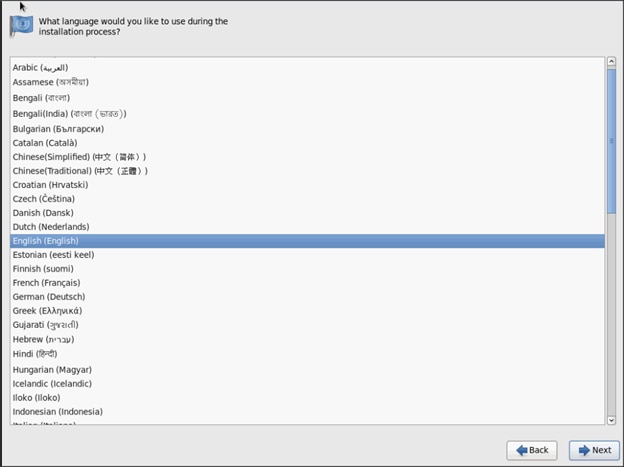

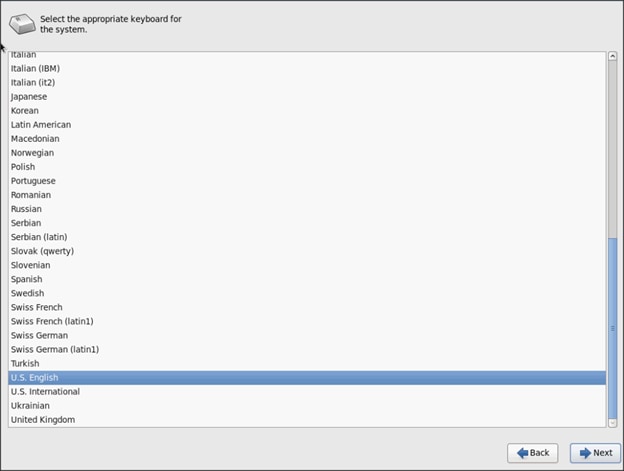

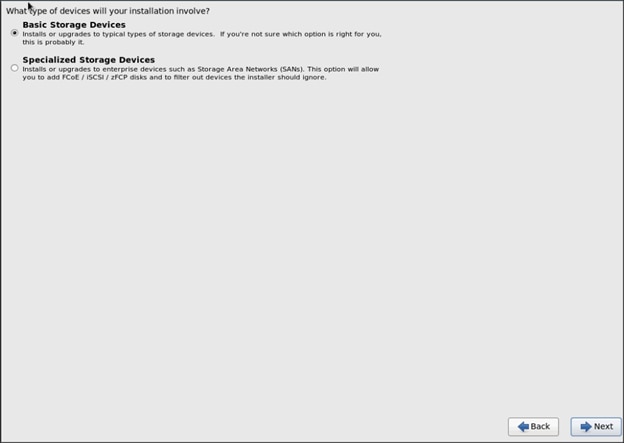

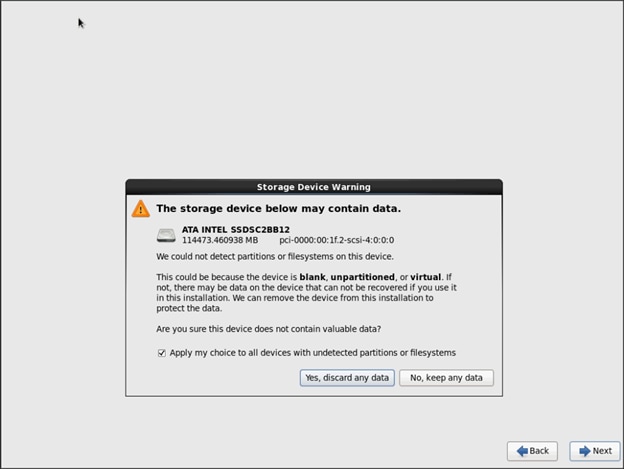

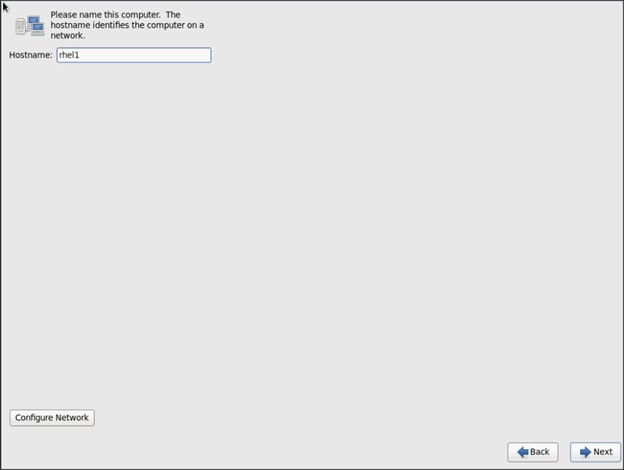

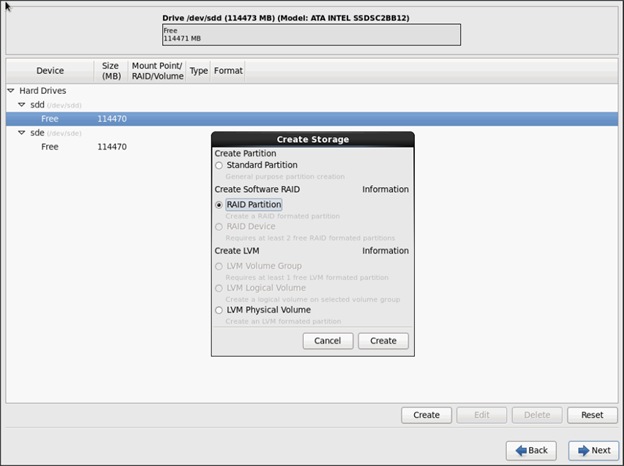

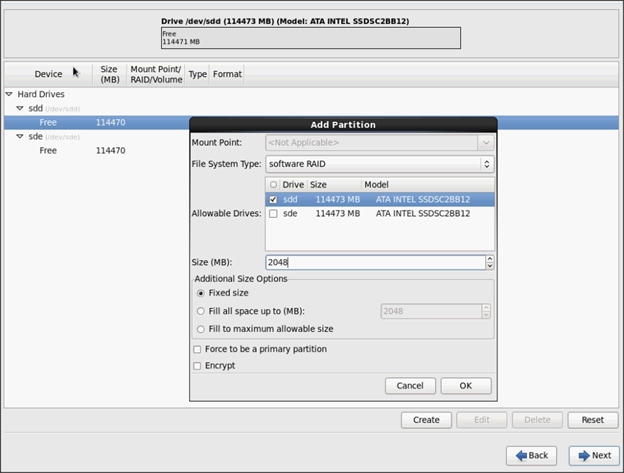

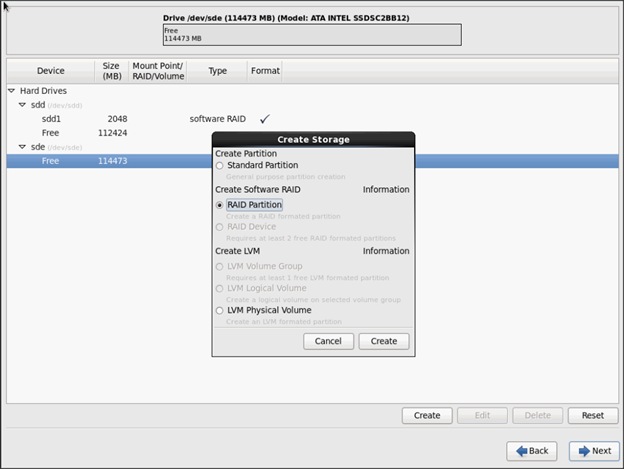

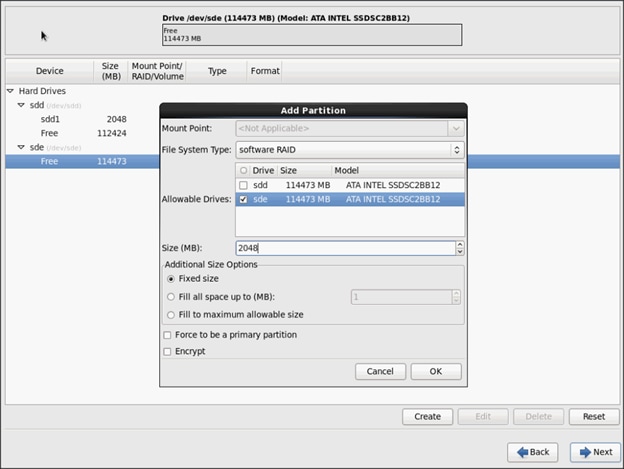

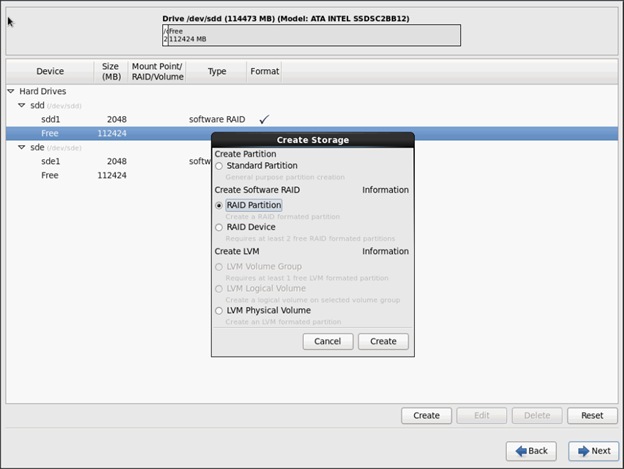

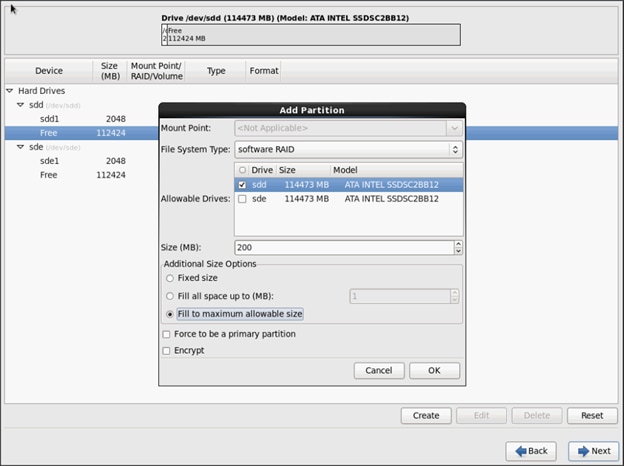

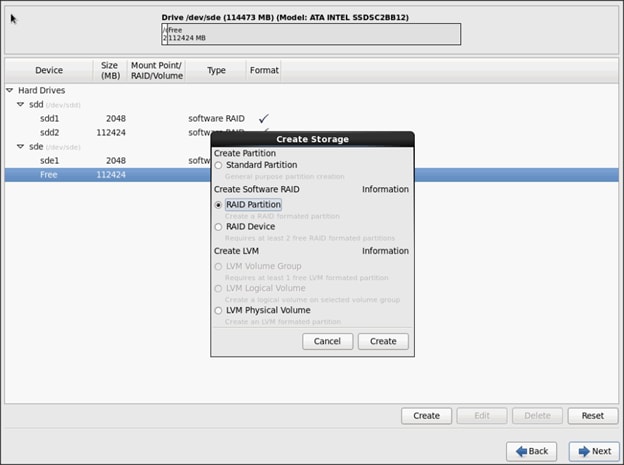

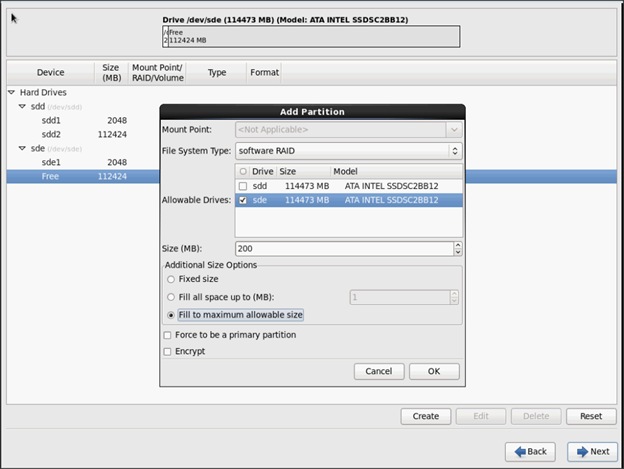

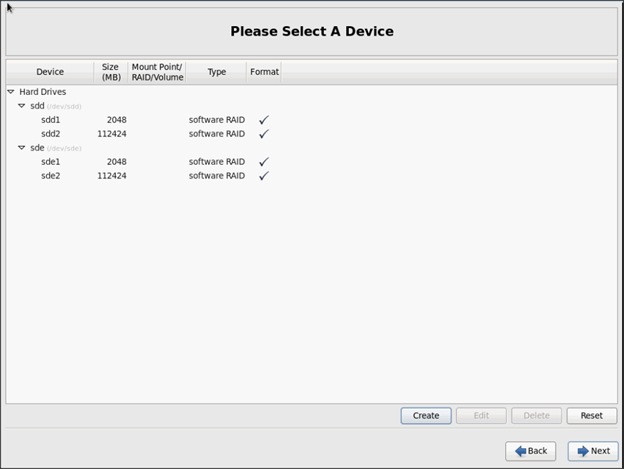

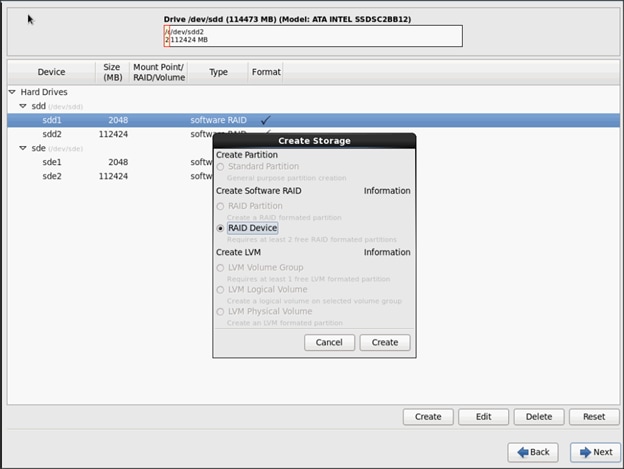

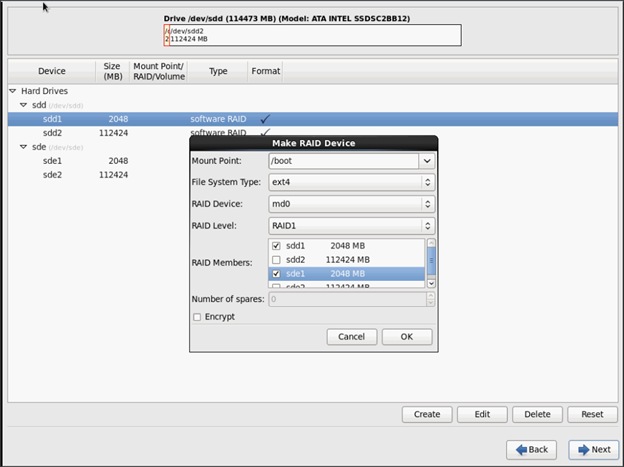

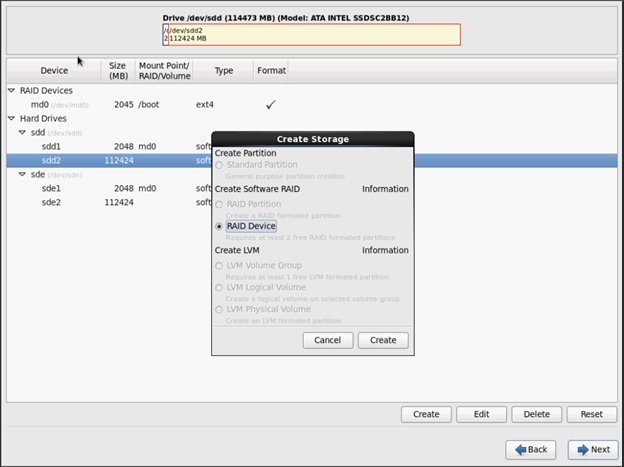

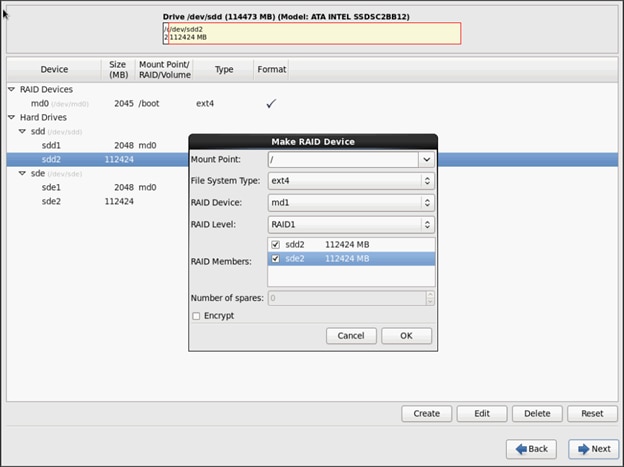

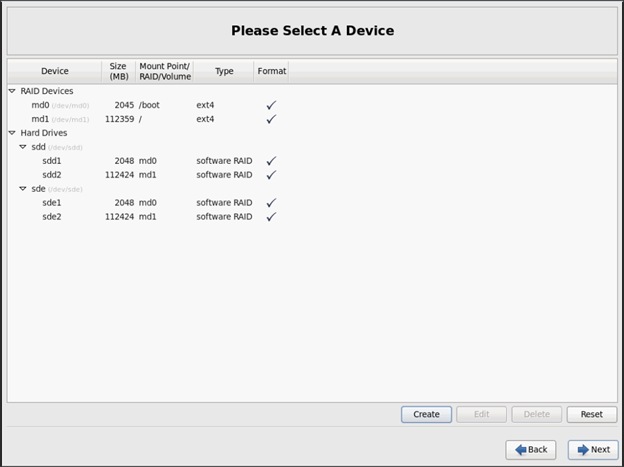

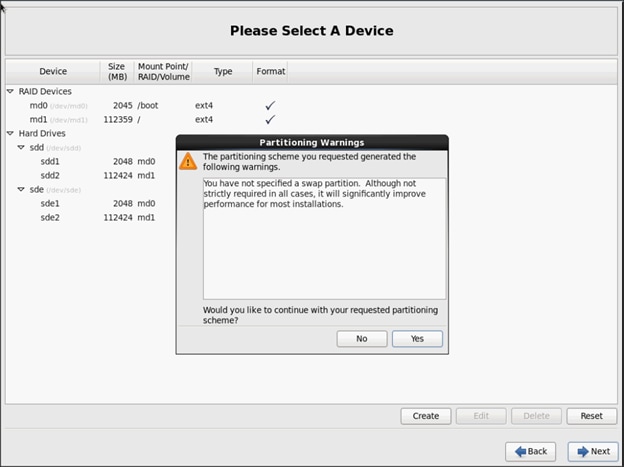

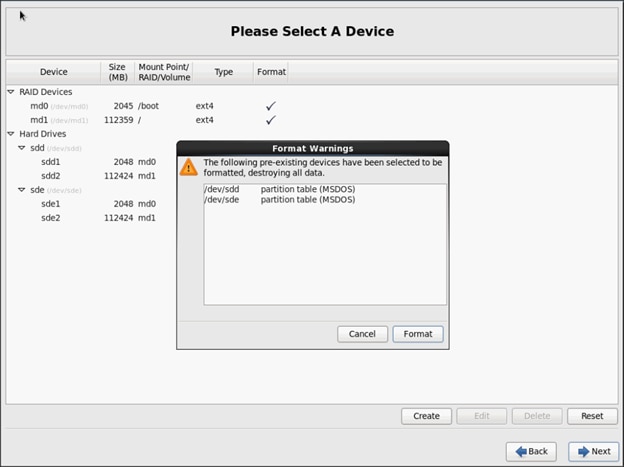

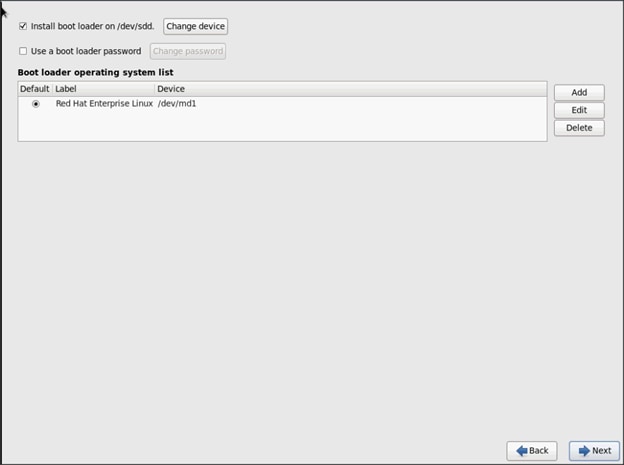

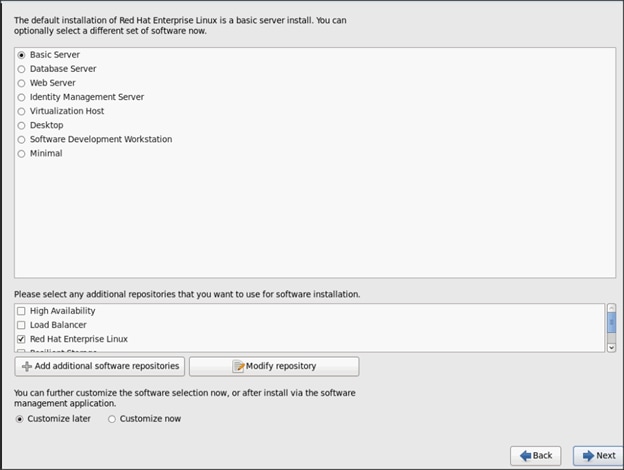

Installing Red Hat Enterprise Linux 6.6 using software RAID on Cisco C240 M4 Systems

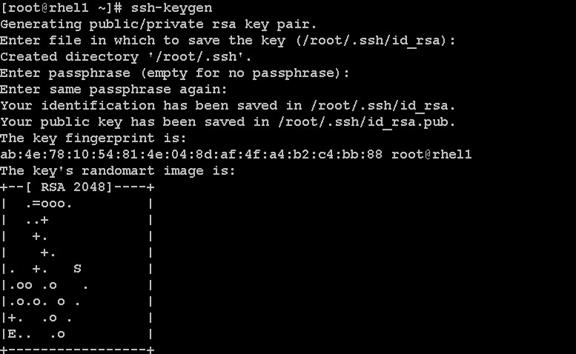

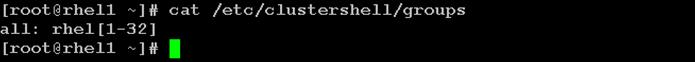

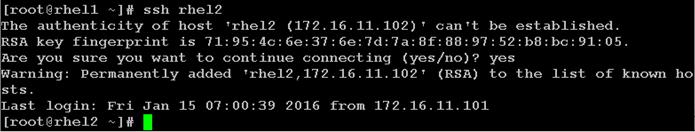

Setting Up Password-less Login

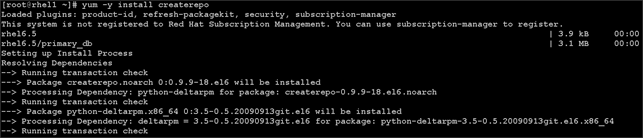

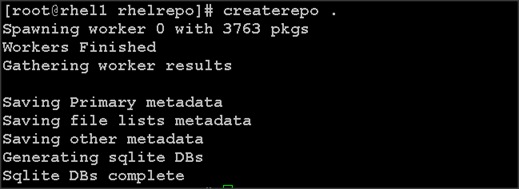

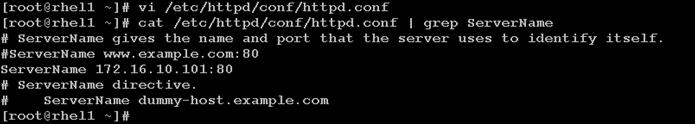

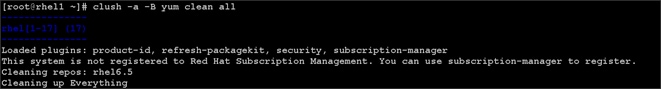

Creating Red Hat Enterprise Linux (RHEL) 6.6 Local Repo

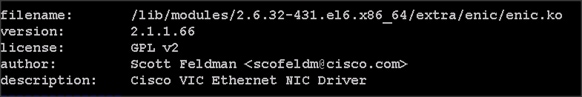

Upgrading Cisco Network Driver for VIC1227

Disable Transparent Huge Pages

Configuring Data Drives on Name Node

Configuring Data Drives on Data Nodes

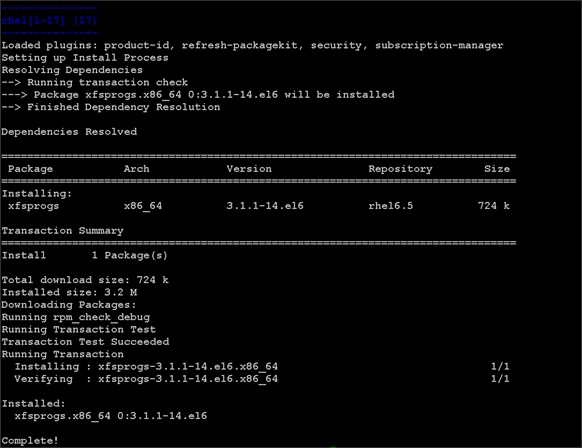

Configuring the Filesystem for NameNodes, and Datanodes

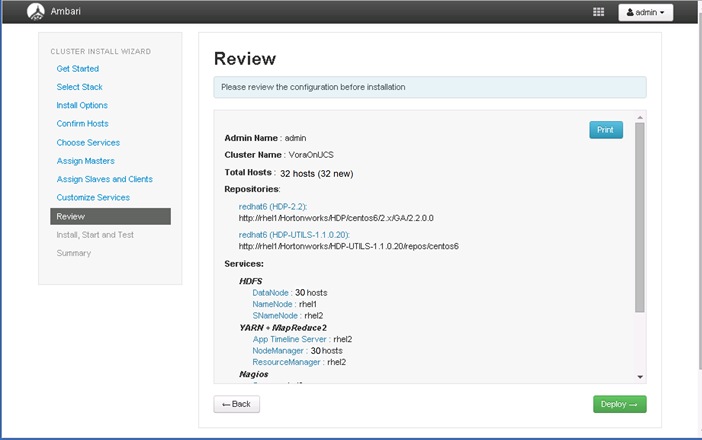

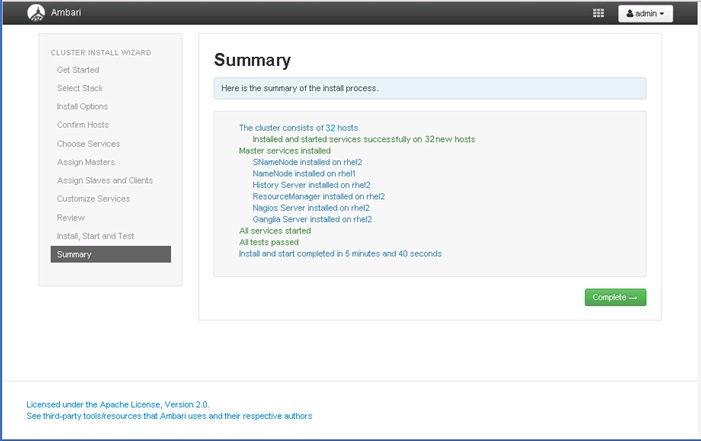

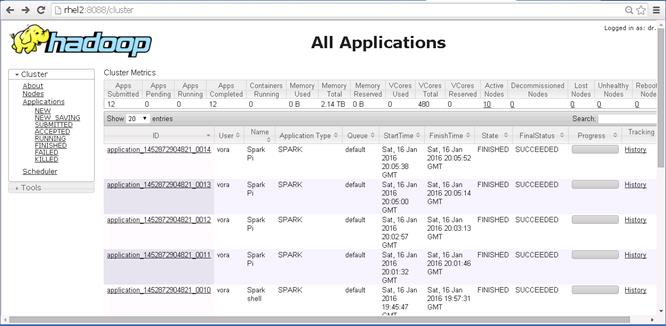

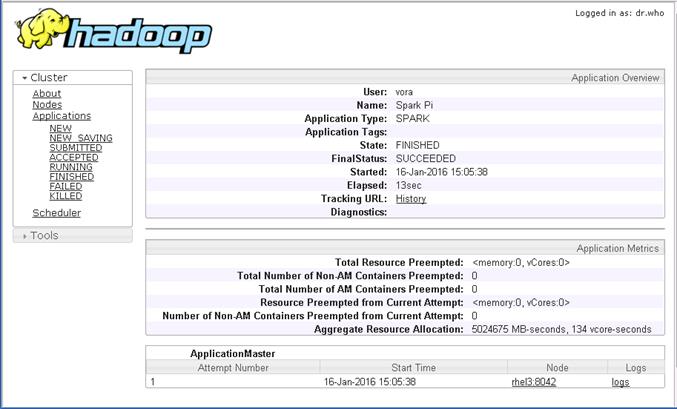

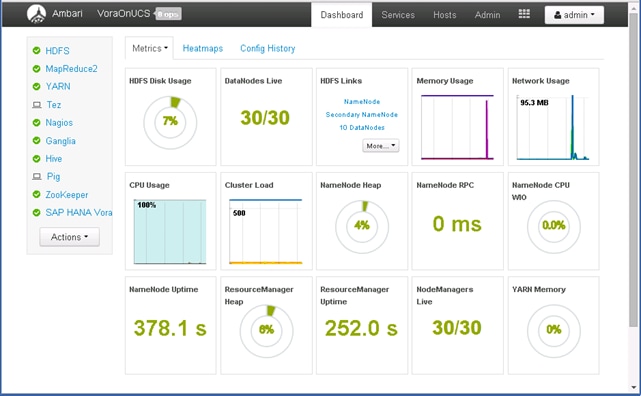

Install and Configure Hadoop, YARN, and Spark

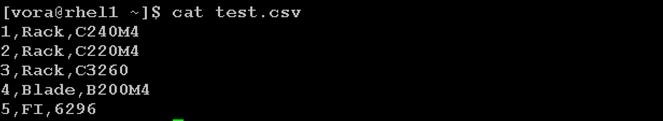

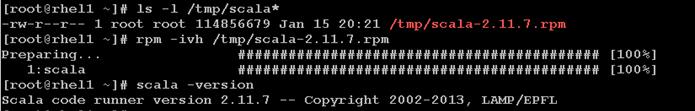

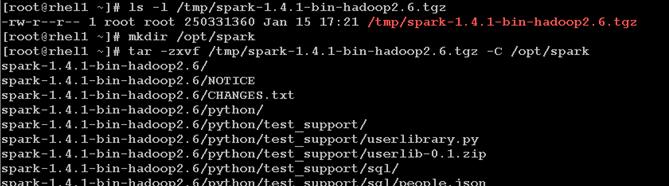

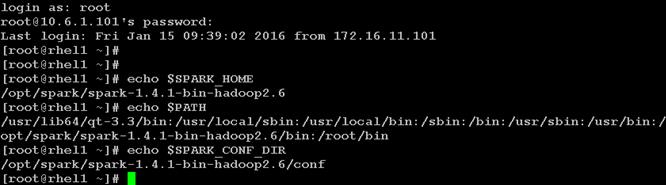

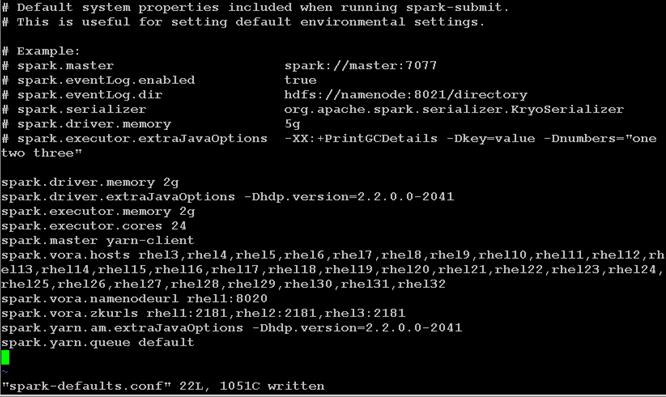

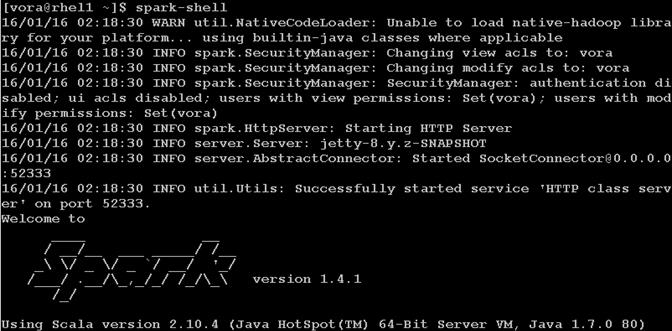

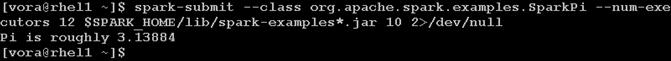

Installing Apache Spark on admin node

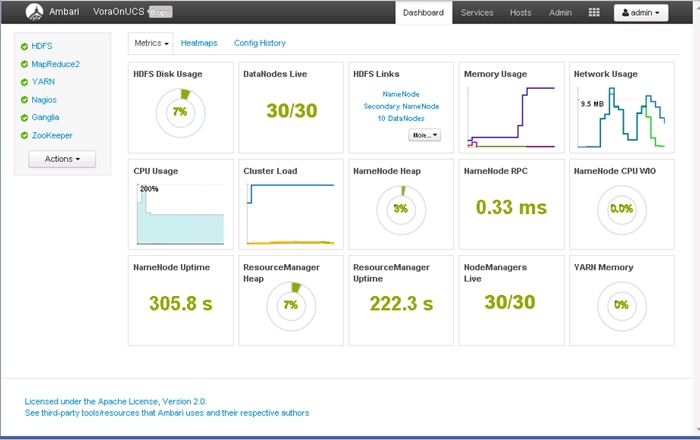

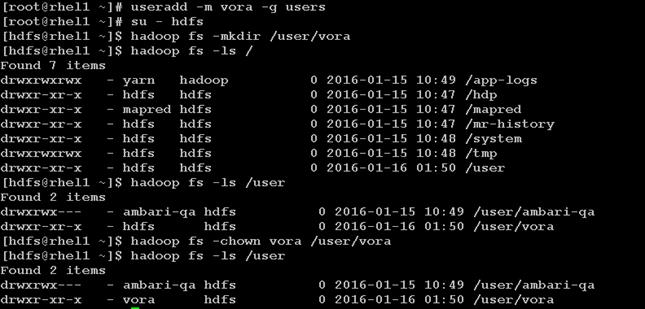

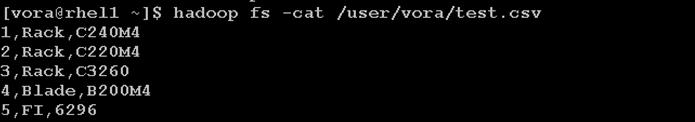

Prepare HDFS for Spark and Vora access

Install and Configure SAP HANA Vora

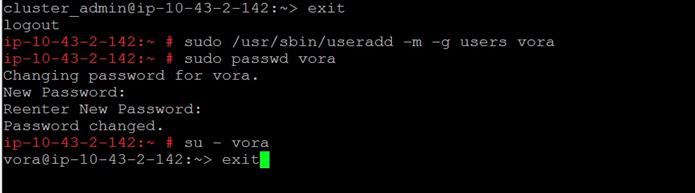

Preparing to Install SAP HANA Vora

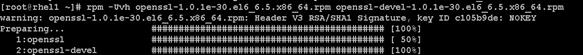

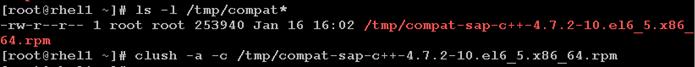

Install the C++ compatibility package

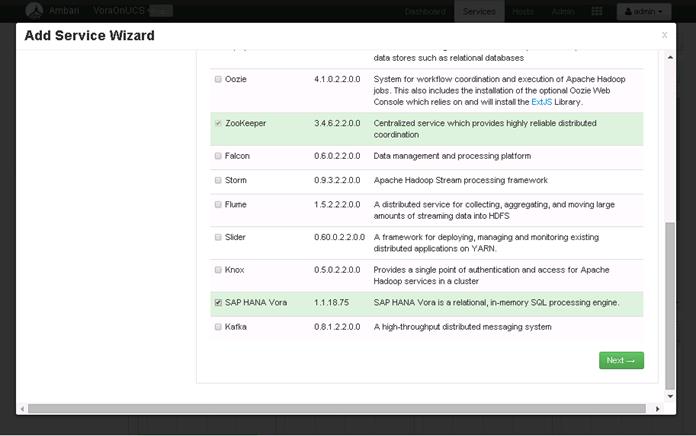

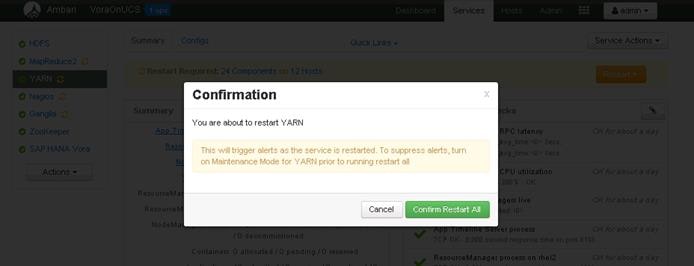

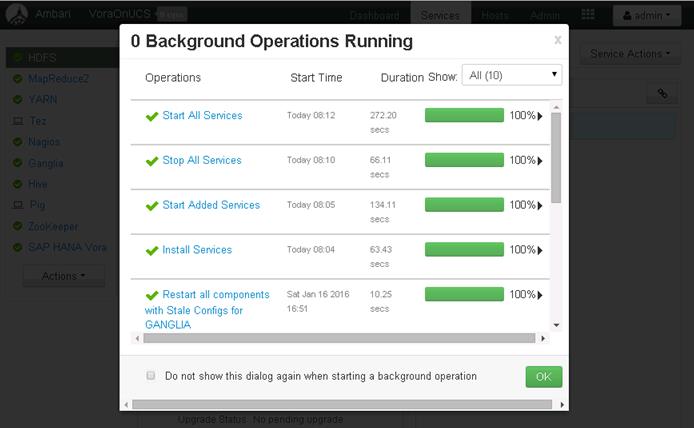

Installing SAP HANA Vora Engine

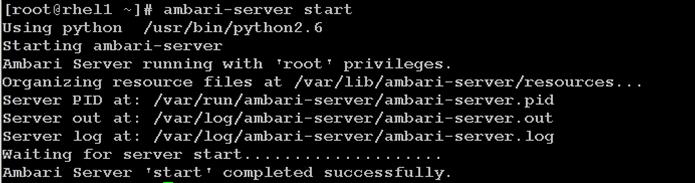

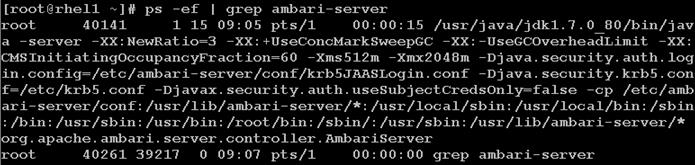

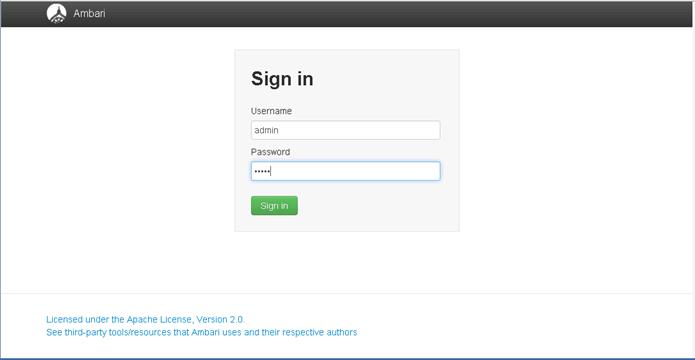

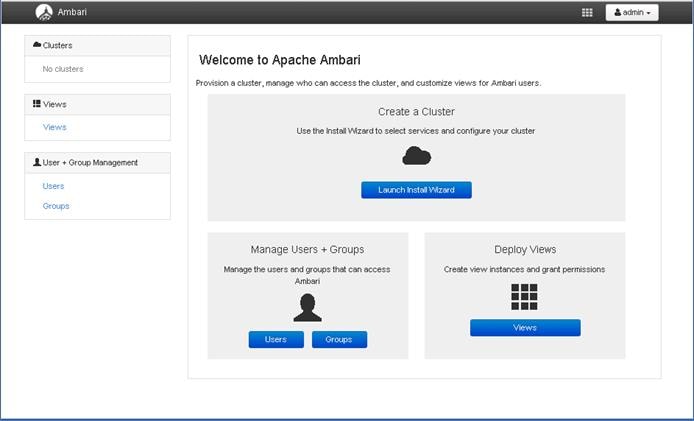

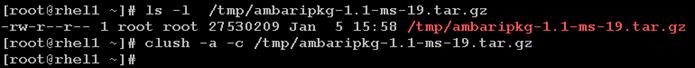

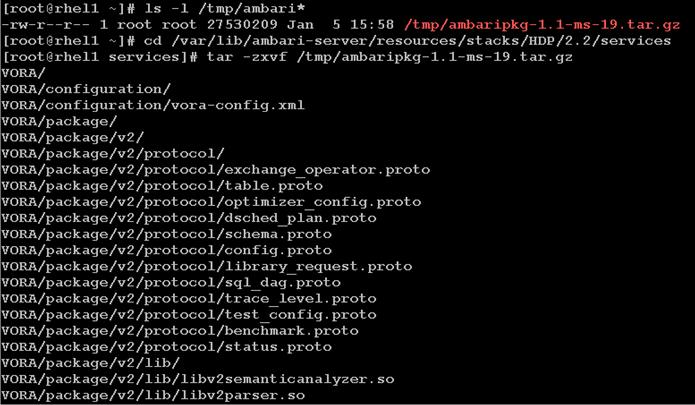

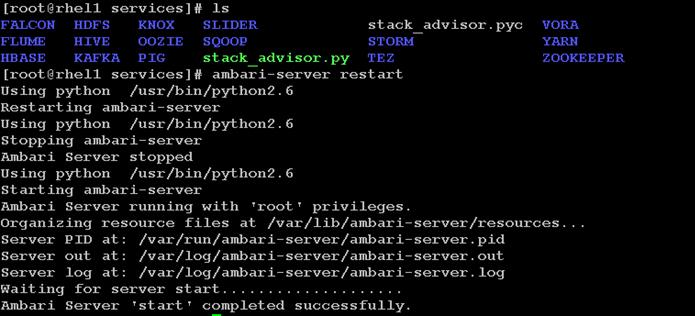

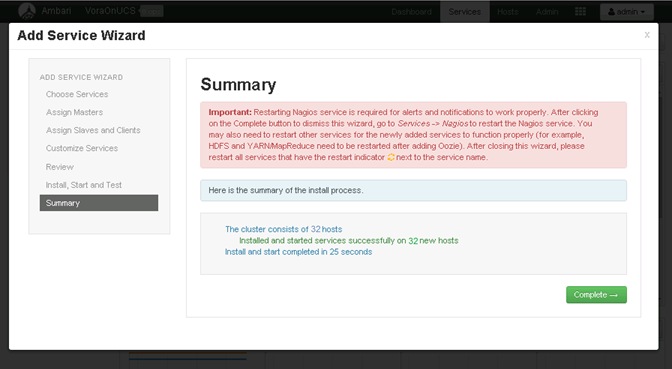

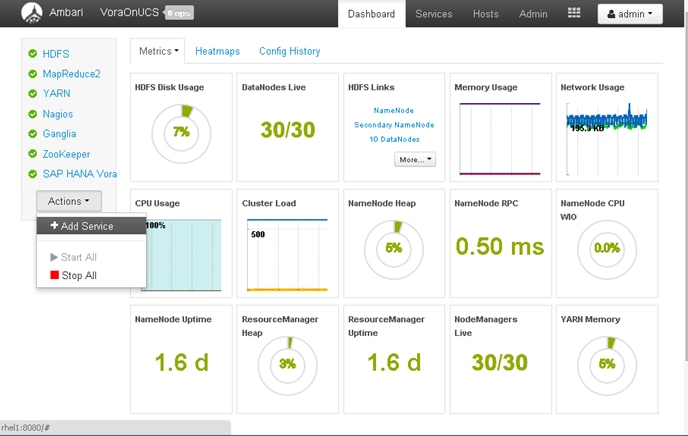

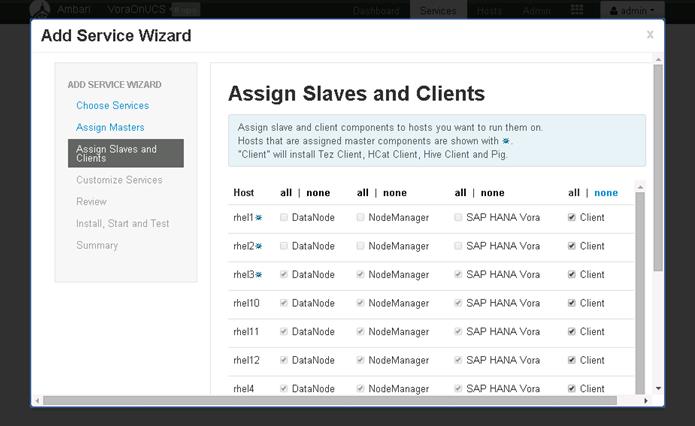

Install Vora Engine on the Ambari Server

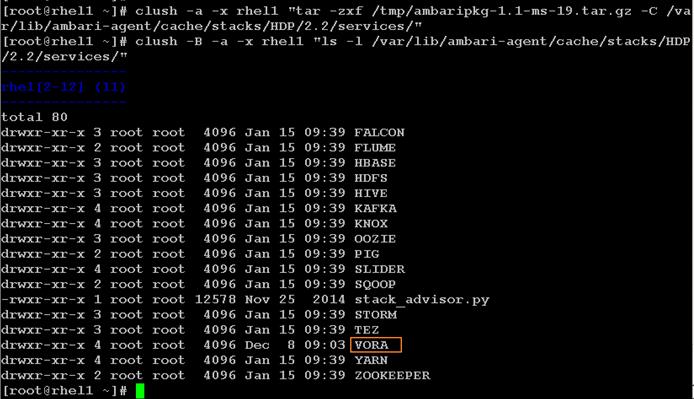

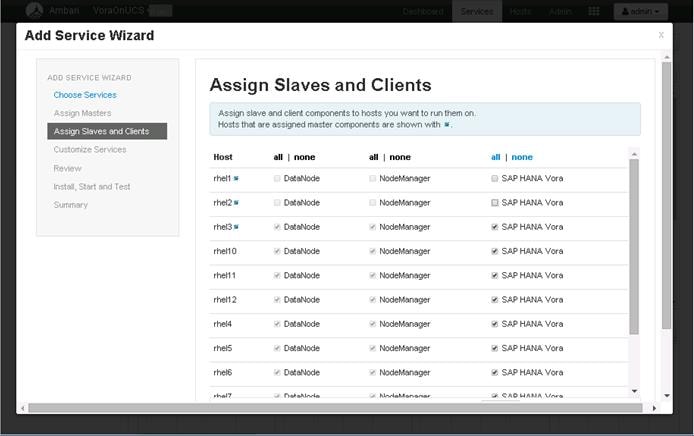

Install Vora Engine on all Vora client nodes.

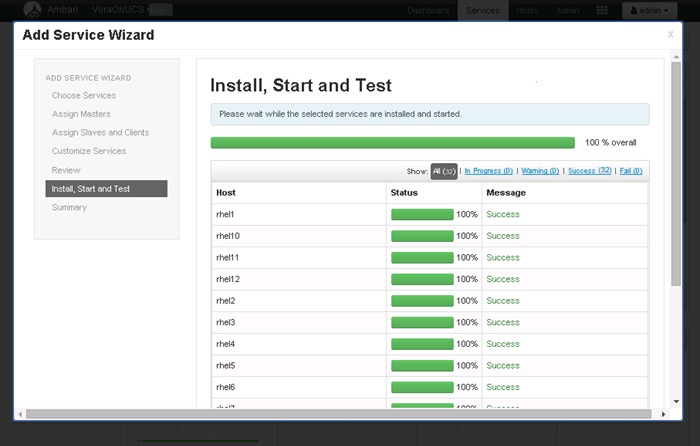

Install SAP HANA Vora on the client nodes (all the data nodes)

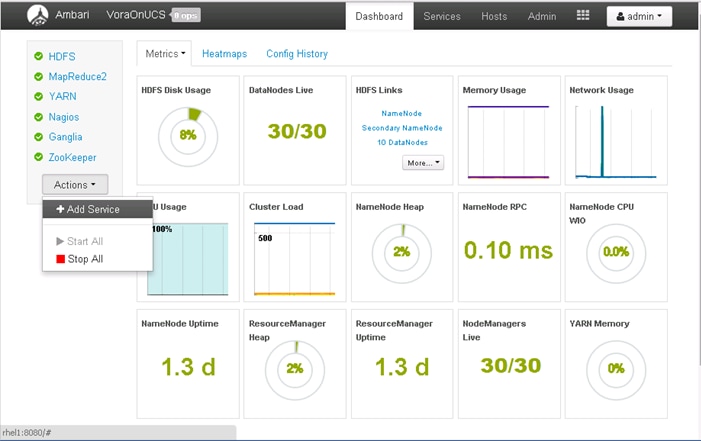

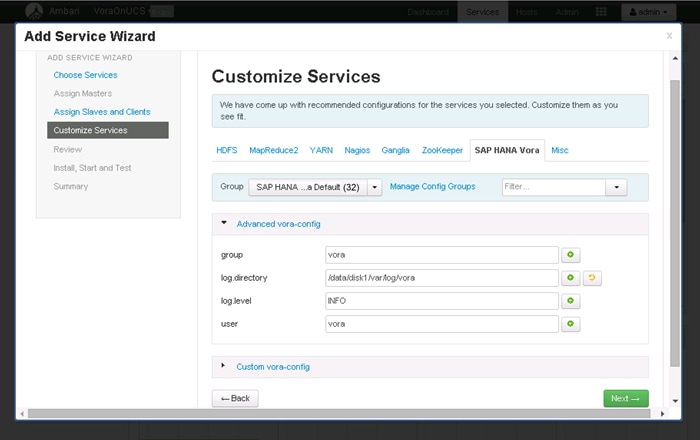

Installing the SAP HANA Vora Extension

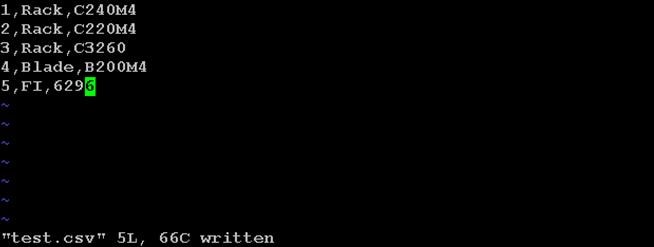

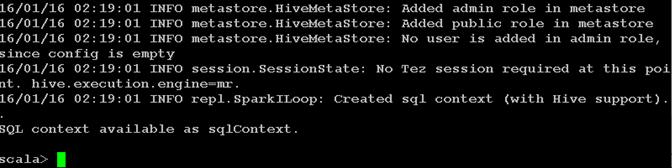

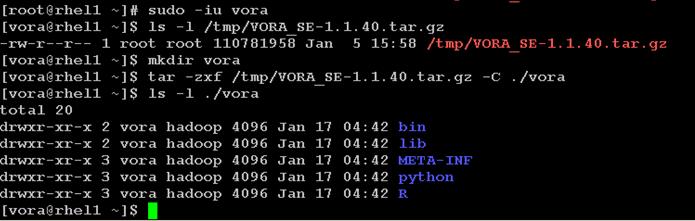

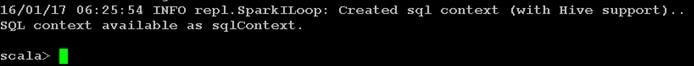

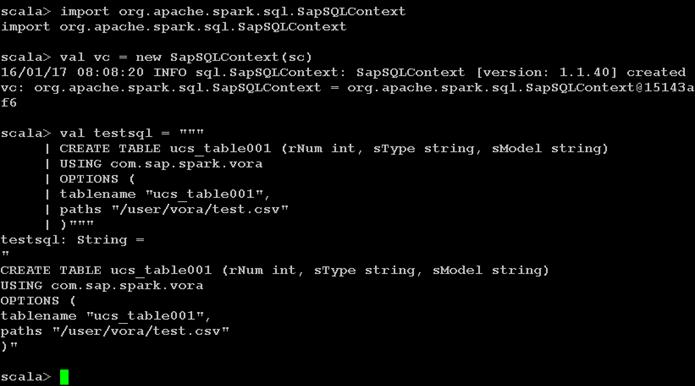

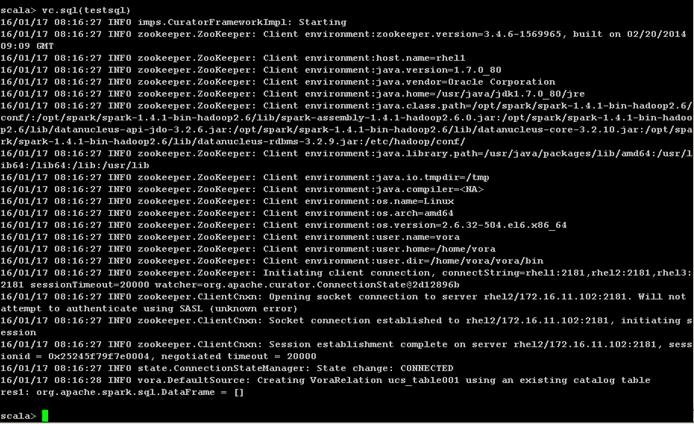

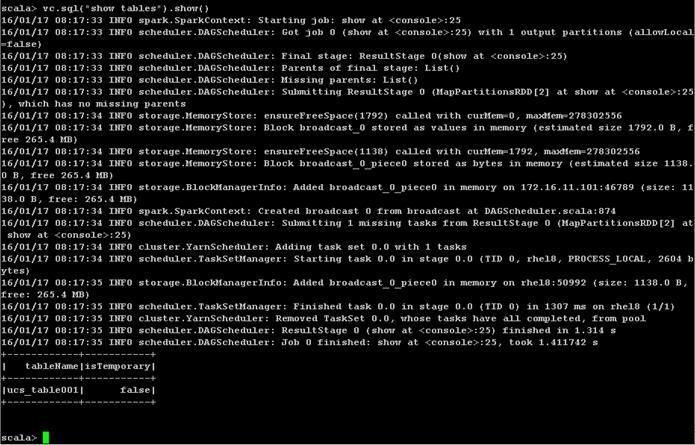

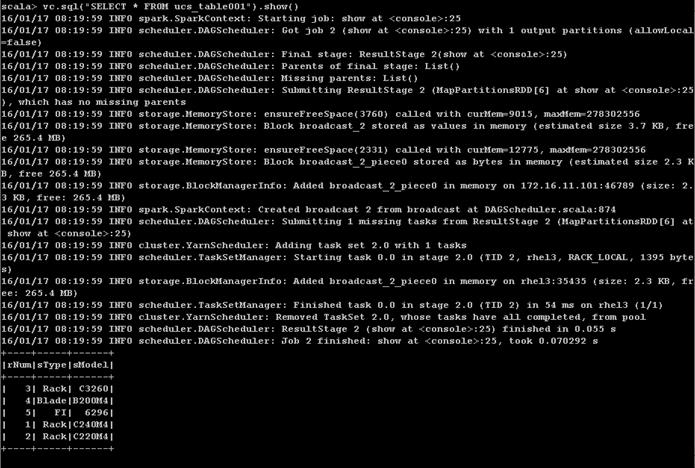

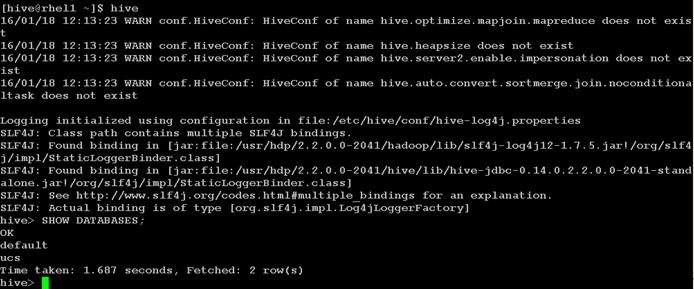

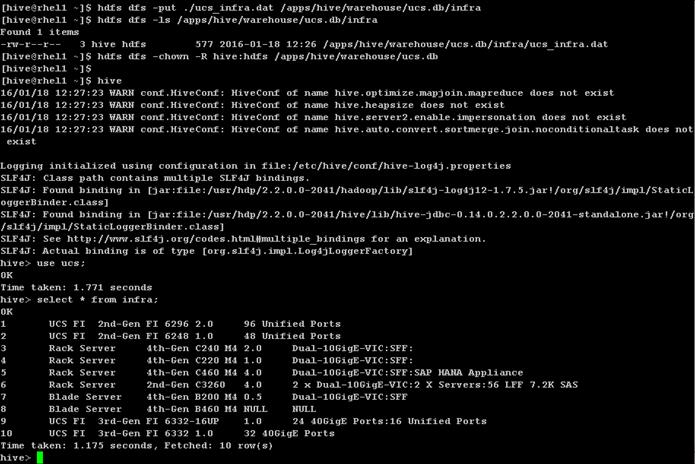

Creating a Table from SAP HANA Vora Shell

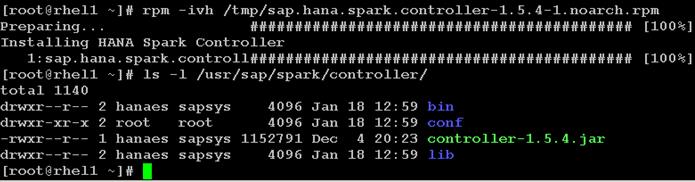

Installing Spark Controller for data access from SAP HANA

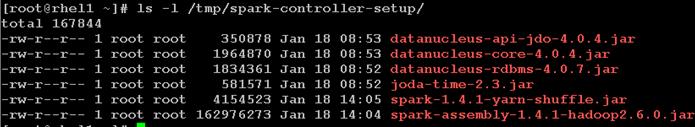

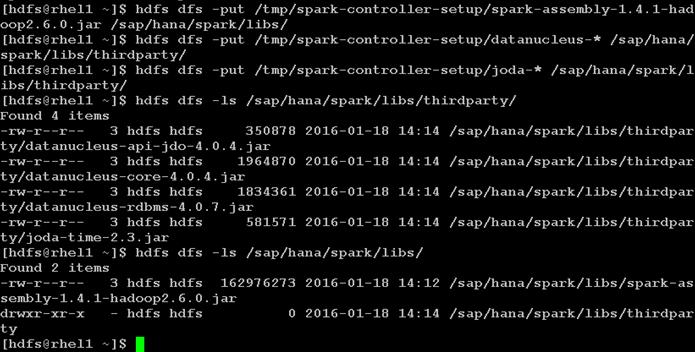

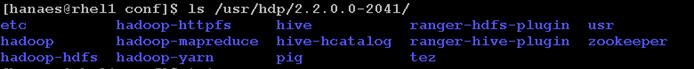

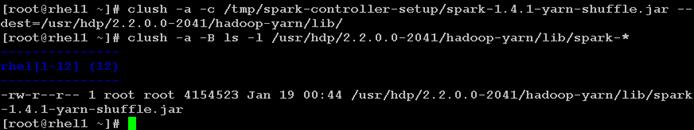

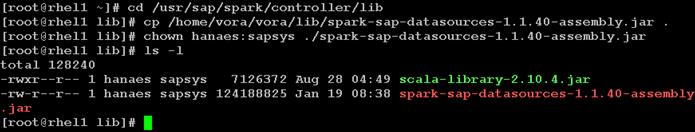

Download and Setup the Library Files Necessary to Configure Spark Controller on the HDFS

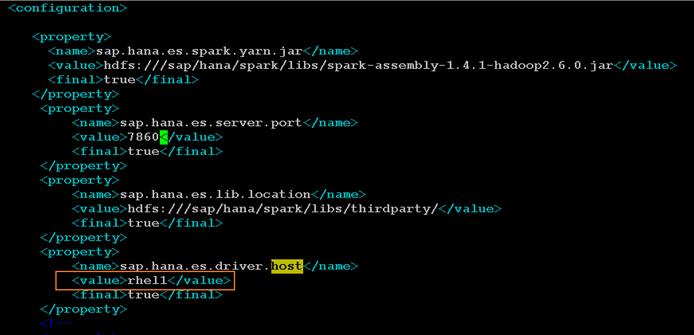

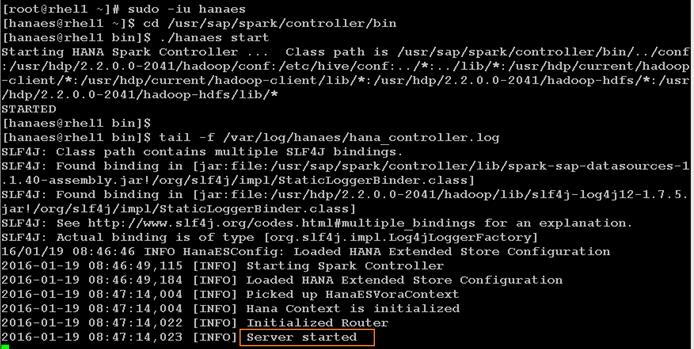

Configure the SAP HANA Spark Controller

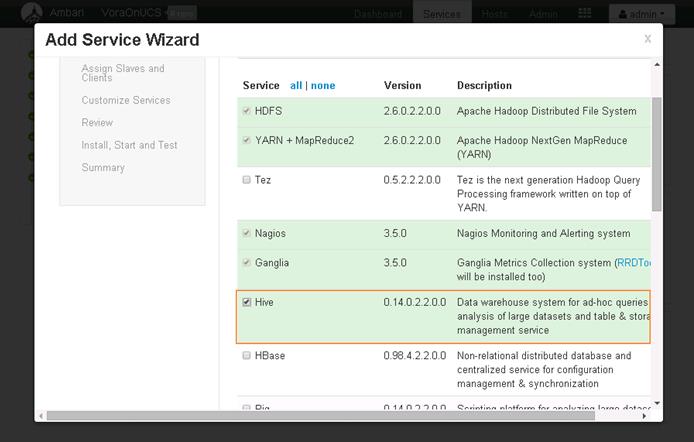

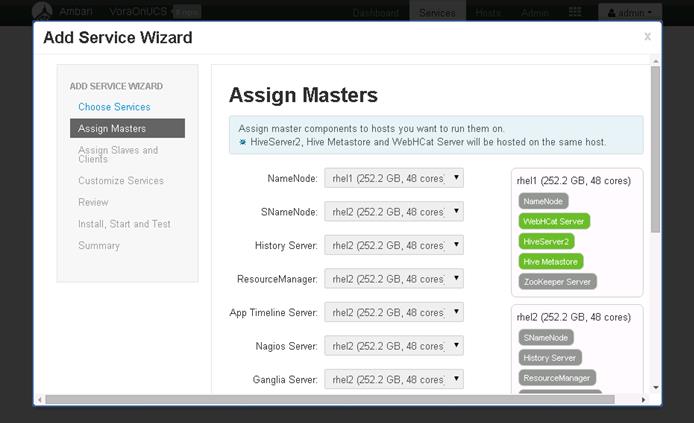

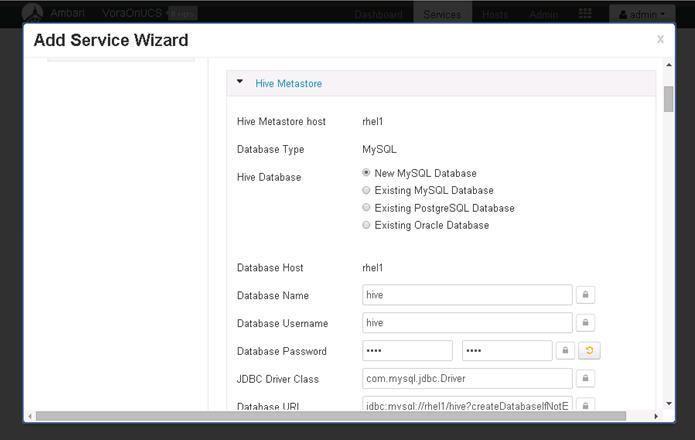

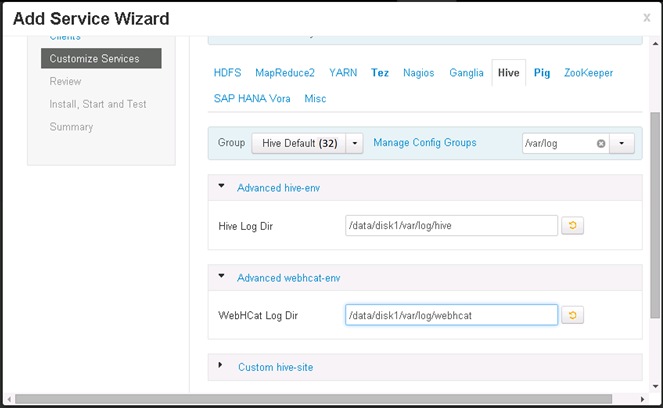

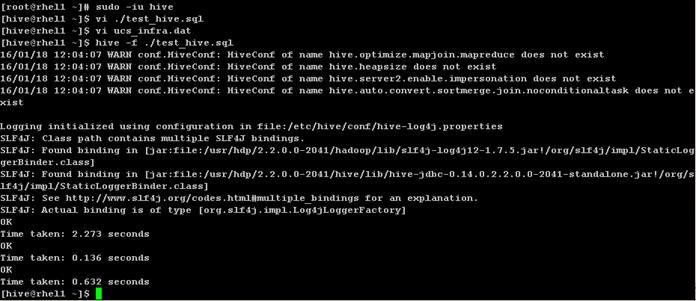

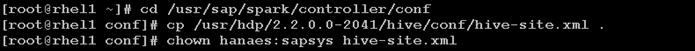

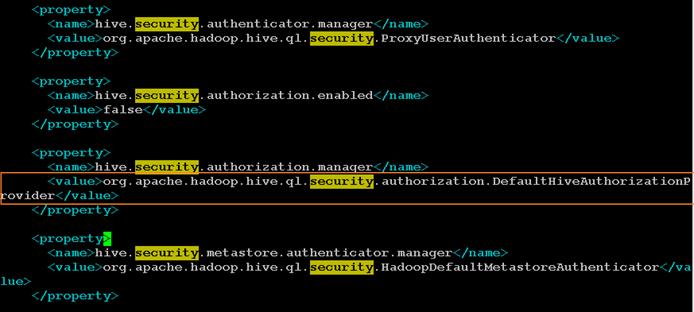

Configure Hive to be used with the Spark Controller

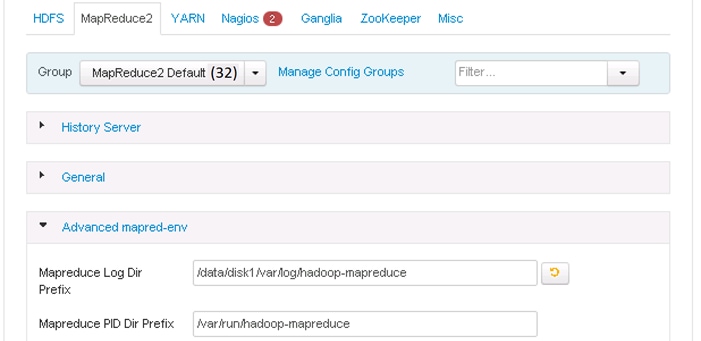

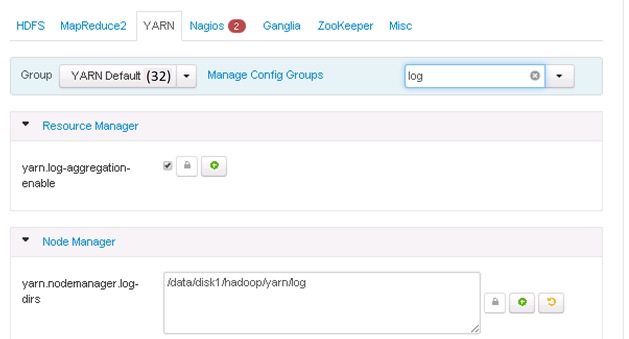

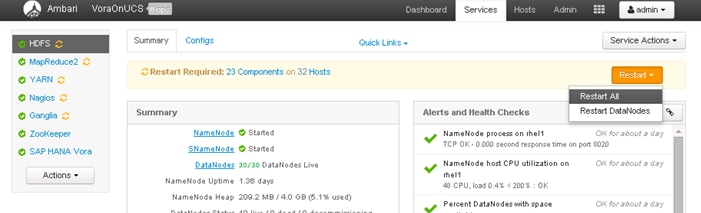

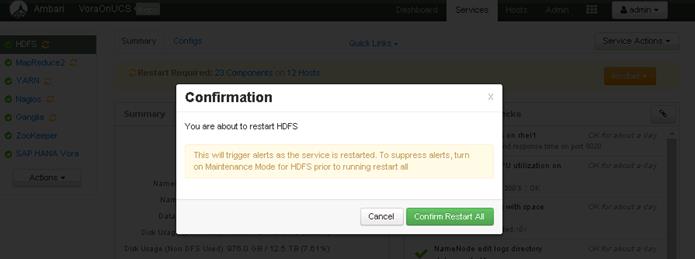

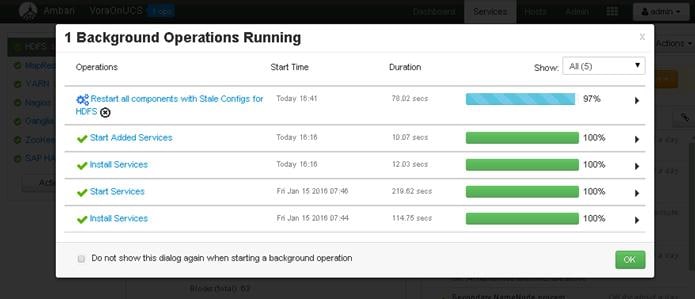

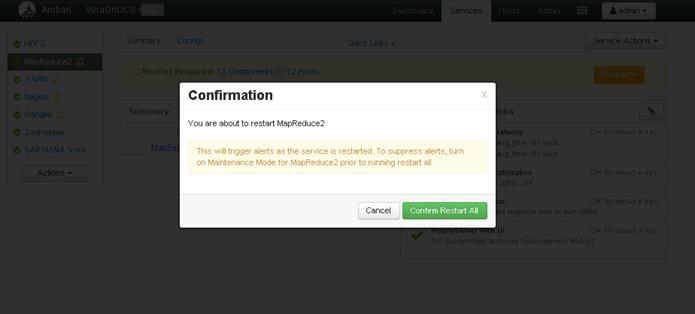

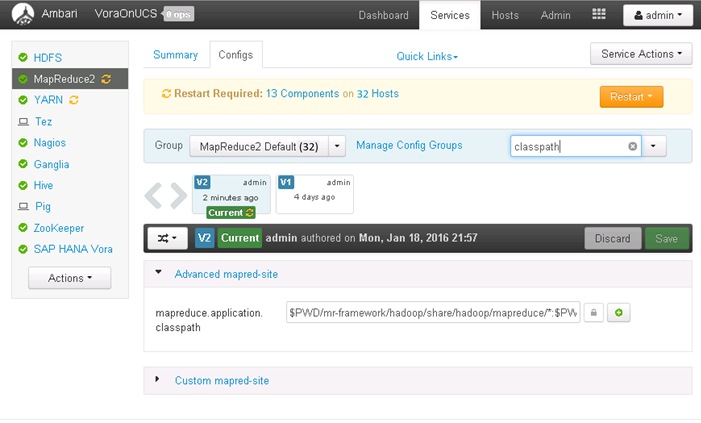

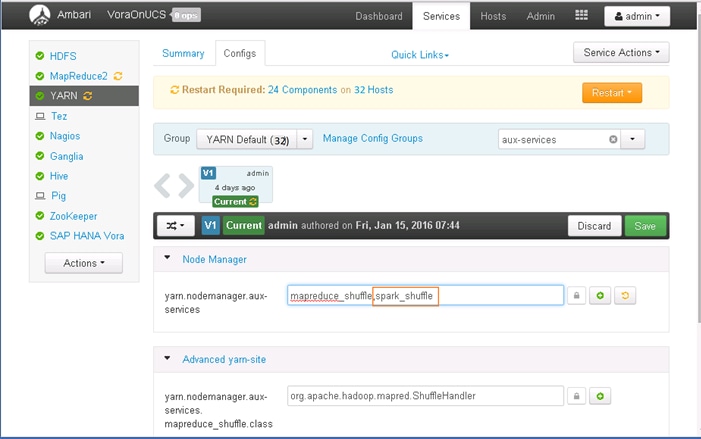

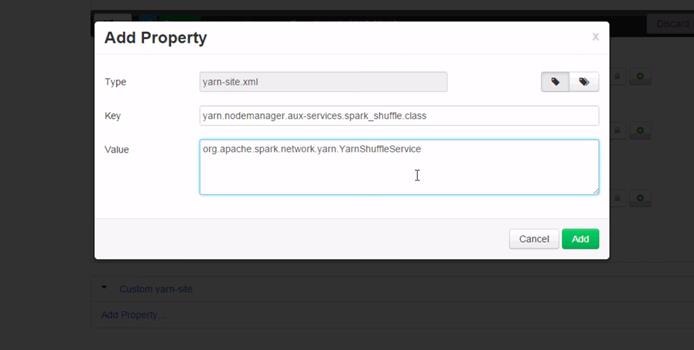

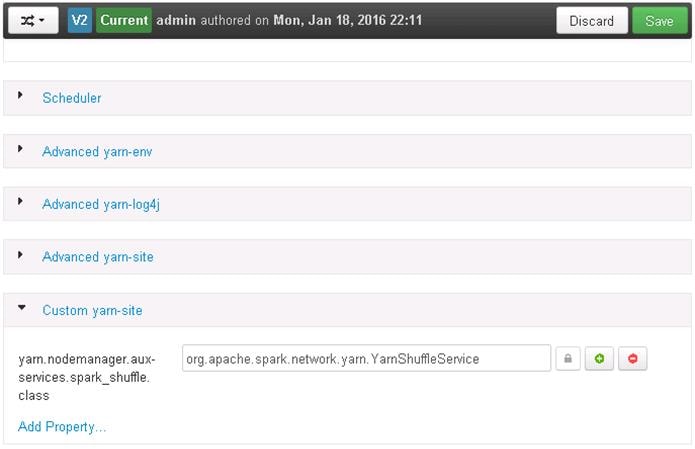

Update MapReduce and YARN configurations in Ambari

Cisco UCS and SAP HANA Vora Deliver a New Dimension to Big Data Analytics

This Cisco Validated Design describes architecture and deployment procedures for creating a SAP HANA Vora cluster on Cisco UCS Integrated Infrastructure for Big Data and Cisco Application Centric Infrastructure (ACI). The deployment creates a simple and linearly scalable architecture, that is centrally managed. Now contextual awareness can be added to big data deployments and run all big data and analytics operations on Cisco UCS Integrated Infrastructure for Big Data. This solution provides access to more precise decision making, democratized data access, and simplified big data ownership.

Cisco UCS Integrated Infrastructure for Big Data with SAP HANA Vora can help your business gain a new level of insight by bringing big data query results into the more static business data stored in SAP HANA. The following are just a few of the ways that the solution can help your staff get the information it needs:

Optimize your supply chain and increase visibility

· Detect fraud.

· Conduct targeted marketing campaigns.

· Improve IT capacity planning activities.

· Improve patient care.

· Proactively maintenance and improved visibility.

· Manage adverse events and product recall activities.

Introduction

Information is most powerful when it is turned into real-time insight. That’s why many organizations use Hadoop and Apache Spark to mine big data stores to identify trends and empower decision makers. Now you can add contextual awareness to your big data deployments and run all of your big data and analytics operations on Cisco UCS Integrated Infrastructure for Big Data. This solution gives you access to more precise decision making, democratized data access, and simplified big data ownership.

While Hadoop can store and access vast amounts of detailed data at lower costs, it is not as well suited to the fast, drill-down nature of today’s business questions. Through data hierarchies that enable online analytical processing (OLAP) analysis of Hadoop data, enhancements in Spark SQL, and compiled queries for accelerated processing across nodes, SAP HANA Vora enables precision decision making across all the data in enterprise applications, data warehouses, data lakes, and edge sensors. SAP HANA Vora works with all major Hadoop distributions and applies the power of in-memory processing to massively distributed data stores. By helping to overcome the limitations of batch-oriented processing, it enables real-time, iterative access to data on Hadoop clusters. Companies can now discover new insights by combining traditional sources of data with valuable data arriving continually from outside the organization, using enterprise-grade data management practices.

Although your enterprise data and big data have value separately, the capability to bring them together presents new opportunities for your data scientists and analysts. Running on the Apache Spark framework, SAP HANA Vora is an in-memory query engine that enables you to easily bring new insights into your SAP landscape. By combining your business information with data from other sources— including streaming, interactive queries, and machine learning—you can accelerate and add context to your decision-making processes for better business outcomes.

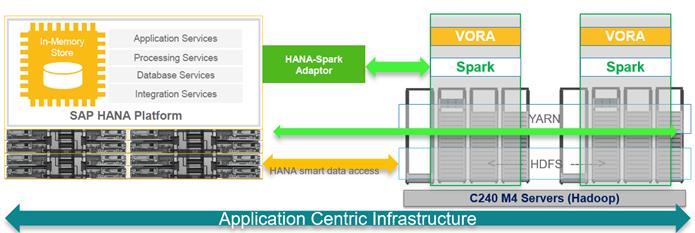

Solution

The Cisco UCS Integrated Infrastructure for Big Data and Cisco ACI with SAP HANA Vora brings together Enterprise Applications and Big Data technologies to provide better business coherence for precise decision making with contextual awareness by combining business data with Hadoop data with an in-memory processing engine.

Process enterprise and Hadoop data simply and cost-effectively for real-time business applications and analytics: The components of the solution include:

· Cisco UCS Integrated Infrastructure for Big Data

· Cisco ACI

· Hadoop

· SAP HANA Vora

Cisco UCS Integrated Infrastructure for Big Data includes computing, storage, connectivity, and unified management capabilities to help companies manage the immense amount of data they collect today. It is built on the Cisco Unified Computing System™ (Cisco UCS) infrastructure, using Cisco UCS 6200 Series Fabric Interconnects, and Cisco UCS C-Series Rack Servers. This architecture is specifically designed for performance and linear scalability for big data workloads.

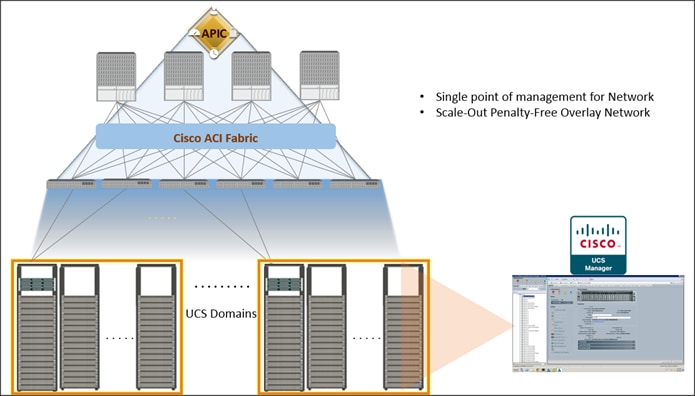

Cisco Application Centric Infrastructure (ACI) Cisco ACI is a comprehensive SDN architecture. One of the core design principles behind ACI was to provide complete visibility into the infrastructure – physical and virtual. ACI is software-defined networking (SDN) and more. Most SDN models stop at the network. ACI extends the promise of SDN—namely agility and automation—to the applications themselves. Through a policy-driven model, the network can cater to the needs of each application, with security, network segmentation, and automation at scale. And it can do so across physical and virtual environments, with a single pane of management.

Figure 1 Solution Overview

Audience

The intended audience of this document includes, but is not limited to, sales engineers, field consultants, professional services, IT managers, partner engineering and customers who want to deploy SAP HANA Vora on Cisco UCS Integrated Infrastructure for Big Data alongside their existing SAP HANA Enterprise Application landscape interconnected by Cisco ACI.

Solution Summary

This CVD describes the architecture and deployment procedures for setting up Cisco UCS C240 M4 servers, based on Cisco UCS Integrated Infrastructure for Big Data and Cisco ACI, bringing together a highly scalable architecture designed to meet a variety of scale-out application demands with seamless data integration and management integration capabilities.

This CVD describes in detail the process of creating the Application Network Profile in the ACI for Big Data application. Application Network Profile is a collection of EPGs, their connections, and the policies that define those connections described in detail later. Application Network Profiles are the logical representation of an application (here Big Data) and its interdependencies in the network fabric. Application Network Profiles are designed to be modeled in a logical way that matches the way that applications are designed and deployed. The configuration and enforcement of policies and connectivity is handled by the system rather than manually by an administrator.

The architecture and deployment procedures for deploying Hortonworks Hadoop, Apache Spark platforms and SAP HANA Vora on Cisco UCS Integrated Infrastructure for Big Data.

![]() This document talks about setting up SAP HANA Vora cluster in a single UCS domain. The System Architecture and Scaling sections below describe 3 Fabric Interconnect domains under a pair of Nexus 9396. This CVD describes the implementation of two Fabric-Interconnect domains under a pair of Nexus 9396. The third domain can be added without adding any additional network over-subscription, as there are enough ports on Nexus 9396 to support this additional domain.

This document talks about setting up SAP HANA Vora cluster in a single UCS domain. The System Architecture and Scaling sections below describe 3 Fabric Interconnect domains under a pair of Nexus 9396. This CVD describes the implementation of two Fabric-Interconnect domains under a pair of Nexus 9396. The third domain can be added without adding any additional network over-subscription, as there are enough ports on Nexus 9396 to support this additional domain.

The current version of Cisco UCS Integrated Infrastructure for Big Data offers the following configuration depending on the compute and storage requirements:

Table 1 Reference Architecture

| SAP HANA Infrastructure |

Performance Optimized |

Scaling with ACI |

| Connectivity 2 Cisco UCS 6296UP 96 Port Fabric Interconnect Scaling: · Up to 80 servers per · Up to 160 servers per 32 Cisco UCS C240 M4 Rack Servers (SFF), each with: · 2 Intel Xeon processors E5-2680 v3 CPUs · 256 GB of memory · Cisco 12-Gbps SAS Modular Raid Controller with 2GB flash-based write cache (FBWC) · 24 1.2TB 10K SFF SAS drives (460TB total) · 2 120GB (or 480GB) 6Gbps 2.5inch Enterprise Value SATA SSDs for Boot · Cisco UCS VIC 1227 (with 2 10GE SFP+ ports) |

Spine Two Cisco Nexus 9508 spine switches with 8 line cards Line card · Eight N9k-X9736PQ line cards with 36 non-blocking ports in each line card. · Total of 288 ports available to fully scale the architecture. Leaf Twenty four Cisco Nexus 9396PX leaf switches Management Three Cisco Application Policy Infrastructure Controller (Cisco APIC) for management and automation of ACI Scaling Up to 5760 servers in a fully populated pair of spines. No over-subscription within a Fabric Interconnect domain and 5.7:1 over-subscription between domains. Further Scaling Can be further expanded to a 12-spine design, allowing for tens of thousands of servers to be part of this infrastructure interconnected by a non-blocking fabric and managed through a single pane of glass. |

![]() This CVD describes the installation process of a 32 node SAP HANA Vora cluster. It is highly recommended that the size of the SSD’s for the boot drive to be 480 Gig.

This CVD describes the installation process of a 32 node SAP HANA Vora cluster. It is highly recommended that the size of the SSD’s for the boot drive to be 480 Gig.

![]() For more details on Connecting Application Centric Infrastructure (ACI) to outside Layer 2 and 3 networks can be found at: http://www.cisco.com/c/en/us/solutions/collateral/data-center-virtualization/application-centric-infrastructure/white-paper-c07-732033.html

For more details on Connecting Application Centric Infrastructure (ACI) to outside Layer 2 and 3 networks can be found at: http://www.cisco.com/c/en/us/solutions/collateral/data-center-virtualization/application-centric-infrastructure/white-paper-c07-732033.html

![]() This CVD describes the install process of SAP HANA Vora with Hortonworks HDP 2.2 and Apache Spark 1.4.1 for a 32 node (2 Master node + 30 Data nodes) of Performance Optimized Cluster configuration

This CVD describes the install process of SAP HANA Vora with Hortonworks HDP 2.2 and Apache Spark 1.4.1 for a 32 node (2 Master node + 30 Data nodes) of Performance Optimized Cluster configuration

This Cisco validated design brings together three main technologies:

1. Cisco UCS Integrated Infrastructure for Big Data

2. Cisco UCS Datacenter Solution for SAP HANA (refer to Cisco Datacenter solutions for SAP HANA)

3. Cisco Application Centric Infrastructure (ACI)

This CVD covers only the #1 and #3 of the technology components, and it can be integrated with any of existing Cisco UCS Datacenter solutions for SAP HANA.

Cisco UCS Integrated Infrastructure for Big Data

The Cisco UCS solution for Hadoop is based on Cisco UCS Integrated Infrastructure for Big Data, a highly scalable architecture designed to meet a variety of scale-out application demands with seamless data integration and management integration capabilities built using the following components:

Cisco UCS 6200 Series Fabric Interconnects

Cisco UCS 6200 Series Fabric Interconnects provide high-bandwidth, low-latency connectivity for servers, with integrated, unified management provided for all connected devices by Cisco UCS Manager. Deployed in redundant pairs, Cisco fabric interconnects offer the full active-active redundancy, performance, and exceptional scalability needed to support the large number of nodes that are typical in clusters serving big data applications. Cisco UCS Manager enables rapid and consistent server configuration using service profiles, automating ongoing system maintenance activities, such as firmware updates, across the entire cluster as a single operation. Cisco UCS Manager also offers advanced monitoring with options to raise alarms and send notifications about the health of the entire cluster.

Figure 2 Cisco UCS 6296UP 96-Port Fabric Interconnect

Cisco UCS C-Series Rack Mount Servers

Cisco UCS C-Series Rack Mount C220 M4 High-Density Rack servers (Small Form Factor Disk Drive Model) and Cisco UCS C240 M4 High-Density Rack servers (Small Form Factor Disk Drive Model) are enterprise-class systems that support a wide range of computing, I/O, and storage-capacity demands in compact designs. Cisco UCS C-Series Rack-Mount Servers are based on Intel Xeon E5-2600 v3 product family and 12-Gbps SAS throughput, delivering significant performance and efficiency gains over the previous generation of servers. The servers use dual Intel Xeon processor E5-2600 v3 series CPUs and support up to 768 GB of main memory (128 or 256 GB is typical for big data applications) and a range of disk drive and SSD options. 24 Small Form Factor (SFF) disk drives are supported in performance-optimized option and 12 Large Form Factor (LFF) disk drives are supported in capacity-optimized option, along with 2x1 Gigabit Ethernet embedded LAN-on-motherboard (LOM) ports. Cisco UCS virtual interface cards 1227 (VICs) designed for the M4 generation of Cisco UCS C-Series Rack Servers are optimized for high-bandwidth and low-latency cluster connectivity, with support for up to 256 virtual devices that are configured on demand through Cisco UCS Manager.

Figure 3 Cisco UCS C240 M4 Rack Server

Cisco UCS Virtual Interface Cards (VICs)

Cisco UCS Virtual Interface Cards (VICs), unique to Cisco, incorporate next-generation converged network adapter (CNA) technology from Cisco, and offer dual 10-Gbps ports designed for use with Cisco UCS C-Series Rack-Mount Servers. Optimized for virtualized networking, these cards deliver high performance and bandwidth utilization and support up to 256 virtual devices. The Cisco UCS Virtual Interface Card (VIC) 1227 is a dual-port, Enhanced Small Form-Factor Pluggable (SFP+), 10 Gigabit Ethernet and Fiber Channel over Ethernet (FCoE)-capable, PCI Express (PCIe) modular LAN on motherboard (mLOM) adapter. It is designed exclusively for the M4 generation of Cisco UCS C-Series Rack Servers and the C3160 dense storage servers.

Figure 4 Cisco UCS VIC 1227

Cisco UCS Manager

Cisco UCS Manager resides within the Cisco UCS 6200 Series Fabric Interconnect. It makes the system self-aware and self-integrating, managing all of the system components as a single logical entity. Cisco UCS Manager can be accessed through an intuitive graphical user interface (GUI), a command-line interface (CLI), or an XML application-programming interface (API). Cisco UCS Manager uses service profiles to define the personality, configuration, and connectivity of all resources within Cisco UCS, radically simplifying provisioning of resources so that the process takes minutes instead of days. This simplification allows IT departments to shift their focus from constant maintenance to strategic business initiatives.

Figure 5 Cisco UCS Manager

Cisco Application Centric Infrastructure (ACI) Overview

Cisco ACI provides the network the ability to deploy and respond to the needs of applications, both in the data center and in the cloud. The network must be able to deliver the right levels of connectivity, security, compliance, firewalls, and load balancing, and it must be able to do this dynamically and on-demand.

This is accomplished through centrally defined policies and application profiles.

The profiles are managed by the new Application Policy Infrastructure Controller [APIC] and distributed to switches, like the Cisco Nexus 9000 Series. Cisco Nexus 9000 Series Switches, and the Cisco Application Policy Infrastructure Controller (APIC) are the building blocks for Cisco ACI.

Cisco ACI is software-defined networking (SDN) plus a whole lot more. Most SDN models stop at the network. Cisco ACI extends the promise of SDN—namely agility and automation—to the applications themselves. Through a policy-driven model, the network can cater to the needs of each application, with security, network segmentation, and automation at scale. And it can do so across physical and virtual environments, with a single pane of management.

The Cisco ACI fabric supports more than 64,000 dedicated tenant networks. A single fabric can support more than one million IPv4/IPv6 endpoints, more than 64,000 tenants, and more than 200,000 10G ports. The Cisco ACI fabric enables any service (physical or virtual) anywhere, with no need for additional software or hardware gateways, to connect between the physical and virtual services, and normalizes encapsulations for Virtual Extensible Local Area Network (VXLAN) / VLAN / Network Virtualization using Generic Routing Encapsulation (NVGRE).

The Cisco ACI fabric decouples the endpoint identity and associated policy from the underlying forwarding graph. It provides a distributed Layer 3 gateway that ensures optimal Layer 3 and Layer 2 forwarding. The fabric supports standard bridging and routing semantics without standard location constraints (any IP address anywhere), and removes flooding requirements for the IP control plane Address Resolution Protocol (ARP) / Generic Attribute Registration Protocol (GARP). All traffic within the fabric is encapsulated within VXLAN.

Architectural Benefits of Using Fabric Interconnect with Cisco ACI

The Cisco ACI fabric consists of discrete components that operate as routers and switches, but is provisioned and monitored as a single entity. The operation is like a single switch and router that provides advanced traffic optimization, security, and telemetry functions, stitching together virtual and physical workloads.

Cisco Application Centric Infrastructure (ACI) and Cisco Unified Computing System (Cisco UCS), working together, can cost-effectively scale capacity, and deliver exceptional performance for the growing demands of big data processing, analytics, and storage workflows. For larger clusters and mixed workloads, Cisco ACI uses intelligent, policy-based flowlet switching and packet prioritization to deliver:

· Centralized Management for the entire Network

· Dynamic load balancing

· Dynamic Packet Prioritization

· Multi-Tenant and Mixed Workload Support

· Deep Telemetry

Centralized Management for the Entire Network

Cisco ACI treats the network as a single entity rather than a collection of switches. It uses a central controller to implicitly automate common practices such as Cisco ACI fabric startup, upgrades, and individual element configuration. The Cisco Application Policy Infrastructure Controller (Cisco APIC) is the unifying point of automation and management for the Cisco Application Centric Infrastructure (ACI) fabric. This architectural approach dramatically increases the operational efficiency of networks, by reducing the time and effort needed to make modifications to the network and, also, for root cause analysis and issue resolution

Dynamic Load Balancing

Cisco’s Application Centric Infrastructure is not only aware of the congestion points but is able to make dynamic decisions on how the traffic is switched/routed. This could be new flows that are about to start or existing long flows which could benefit from moving to a less congested route. Dynamic load balancing takes care of these decisions at run time automatically and helps utilize the links optimally – both the healthy and the congested links. This is useful in both congested link scenarios and scenarios where there are link failures. Even when there is no congestion this will maintain close to optimal distribution of traffic across the spines.

Dynamic Packet Prioritization (DPP), prioritizes short flows higher than long flows; a short flow is less than approximately 15 packets. Short flows are more sensitive to latency than long ones. Small and urgent data workloads, such as database queries, may suffer processing latency delays because larger data sets are being sent across the fabric ahead of them. This approach presents a challenge for instances in which database queries require near-real-time results.

Dynamic Packet Prioritization can improve overall application performance. Together these technologies enable performance enhancements to applications, including Big Data workloads.

Multi-Tenant and Mixed Workload Support

Cisco ACI is built to incorporate secure multi-tenancy capabilities. The fabric enables customers to host multiple concurrent Big Data clusters on a shared infrastructure. Cisco ACI provides the capability to enforce proper isolation and SLA’s for workloads of different tenants. These benefits extend beyond multiple Big Data workloads – Cisco ACI allows the same cluster to run a variety of different application workloads, not just Big Data, with the right level of security and SLA for each workload.

Deep Telemetry of Tenant and Application Network

One of the core design principles behind Cisco ACI is to provide complete visibility into the infrastructure – physical and virtual. Cisco APIC is designed to provide application and tenant health at a system level by using real-time metrics, latency details, atomic counters, and detailed resource consumption statistics

If your application is experiencing performance issues, you can drill down easily into the lowest possible granularity – be it at a switch level, line card level, or port level.

The holistic approach to correlate virtual and physical and tie that intelligence to an application or tenant level ensures that troubleshooting becomes extremely simple across your infrastructure, through a single pane of glass.

Easy Migration to 40 Gbps in the Network

Cisco QSFP BiDi technology removes 40-Gbps cabling cost barriers for migration from 10-Gbps to 40-Gbps connectivity in data center networks. Cisco QSFP BiDi transceivers provide 40-Gbps connectivity with immense savings and simplicity compared to other 40-Gbps QSFP transceivers. The Cisco QSFP BiDi transceiver allows organizations to migrate the existing 10-Gbps cabling infrastructure to 40 Gbps at no cost and to expand the infrastructure with low capital investment. Together with Cisco Nexus 9000 Series Switches, which introduce attractive pricing for networking devices, Cisco QSFP BiDi technology provides a cost-effective solution for migration from 10-Gbps to 40-Gbps infrastructure.

Cisco ACI Building blocks

Cisco ACI consists of:

· Cisco Nexus 9000 Series Switches

· Centralized policy management and Cisco Application Policy Infrastructure Controller (APIC)

Nexus 9000 Series Switches

The Nexus 9000 Series Switches offer both modular (9500 switches) and fixed (9300 switches), 1/10/40/100 Gigabit Ethernet switch configurations designed to operate in one of two modes:

· Cisco NX-OS mode for traditional architectures and consistency across the Cisco Nexus portfolio.

· Cisco ACI mode to take full advantage of the policy-driven services and infrastructure automation features of ACI.

The ACI-Ready Cisco Nexus 9000 Series provides:

· Accelerated migration to 40G: zero cabling upgrade cost with Cisco QSFP+ BiDi Transceiver Module innovation.

· Switching platform integration: Nexus 9000 Series enables a highly scalable architecture and is software upgradable to ACI.

· Streamlined application management: drastically reduce application deployment time and get end-to-end application visibility.

This architecture consists of Nexus 9500 series switches acting as the spine, and Nexus 9300 series switches as leaves.

Cisco Nexus 9508 Spine Switch

The Cisco Nexus 9508 Switch offers a comprehensive feature set, high resiliency, and a broad range of 1/10/40 Gigabit Ethernet line cards to meet the most demanding requirements of enterprise, service provider, and cloud data centers. The Cisco Nexus 9508 Switch is an ACI modular spine device enabled by a non-blocking 40 Gigabit Ethernet line card, supervisors, system controllers, and power supplies.

The Cisco Nexus 9500 platform internally uses a Clos fabric design that interconnects the line cards with rear-mounted fabric modules. The Cisco Nexus 9500 platform supports up to six fabric modules, each of which provides up to 10.24-Tbps line-rate packet forwarding capacity. All fabric cards are directly connected to all line cards. With load balancing across fabric cards, the architecture achieves optimal bandwidth distribution within the chassis.

Figure 6 Cisco Nexus 9508 Switch

ACI Spine Line Card for Nexus 9508

There are multiple spine line cards supported on Nexus 9508. This architecture uses the N9K-X9736PQ: 40 Gigabit Ethernet ACI Spine Line Card.

· 36-port 40 Gigabit Ethernet QSFP+ line card

· Non-blocking

· Designed for use in an ACI spine switch role

· Works only in ACI mode

· Cannot mix with non-spine line cards

· Supported in 8-slot chassis

Figure 7 N9K-X9736PQ Line card

Cisco Nexus 9396 Leaf Switch

The Cisco Nexus 9396X Switch delivers comprehensive line-rate layer 2 and layer 3 features in a two-rack-unit (2RU) form factor. It supports line rate 1/10/40 GE with 960 Gbps of switching capacity. It is ideal for top-of-rack and middle-of-row deployments in both traditional and Cisco Application Centric Infrastructure (ACI)–enabled enterprise, service provider, and cloud environments.

Figure 8 Cisco Nexus 9396PX Switch

Tenant: A tenant is a logical container or a folder for application policies. This container can represent an actual tenant, an organization, an application or can just be used for the convenience of organizing information. A tenant represents a unit of isolation from a policy perspective. All application configurations in Cisco ACI are part of a tenant. Within a tenant, you define one or more Layer 3 networks (VRF instances), one or more bridge domains per network, and EPGs to divide the bridge domains.

Application Profile: Modern applications contain multiple components. For example, an e-commerce application could require a web server, a database server, data located in a storage area network, and access to outside resources that enable financial transactions. An application profile models application requirements and contains as many (or as few) End Point Groups (EPGs) as necessary that are logically related to providing the capabilities of an application.

Bridge Domain: A bridge domain represents a L2 forwarding construct within the fabric. One or more EPG can be associated with one bridge domain or subnet. A bridge domain can have one or more subnets associated with it. One or more bridge domains together form a tenant network.

End Point Group (EPG): An End Point Group (EPG) is a collection of physical and/or virtual end points that require common services and policies. An End Point Group example is a set of servers or storage LIFs on a common VLAN providing a common application function or service. While the scope of an EPG definition is much wider, in the simplest terms an EPG can be defined on a per VLAN segment basis where all the servers or VMs on a common LAN segment become part of the same EPG.

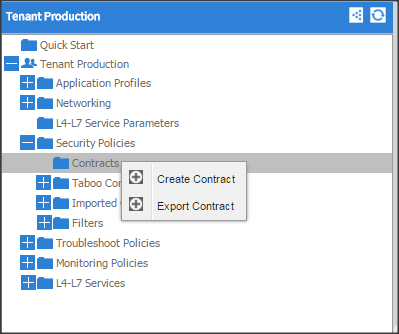

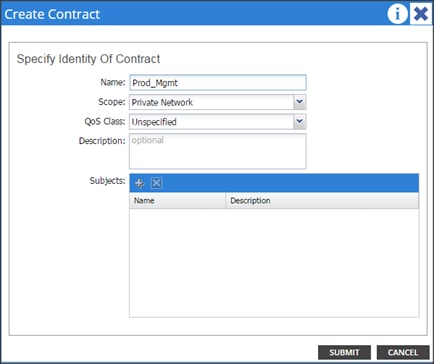

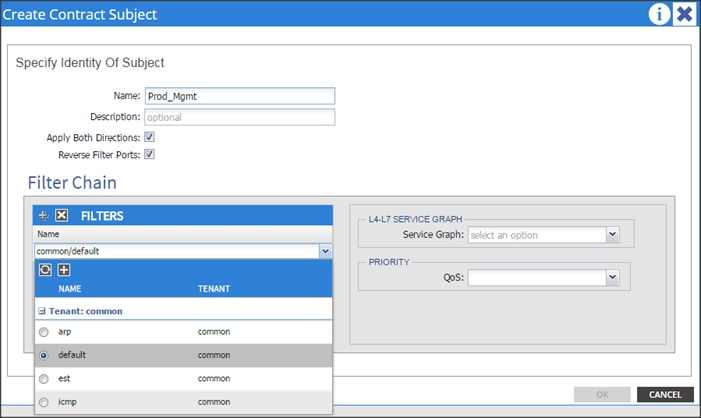

Contracts: A service contract can exist between two or more participating peer entities, such as two applications running and communicating with each other behind different endpoint groups, or between providers and consumers, such as a DNS contract between a provider entity and a consumer entity. Contracts utilize filters to limit the traffic between the applications to certain ports and protocols.

Figure 10 below covers the relationship between the ACI elements defined above. As shown in the figure, a Tenant can contain one or more application profiles and an application profile can contain one or more end point groups. The devices in the same EPG can talk to each other without any special configuration. Devices in different EPGs can talk to each other using contracts and associated filters. A tenant can also contain one or more bridge domains and multiple application profiles and end point groups can utilize the same bridge domain.

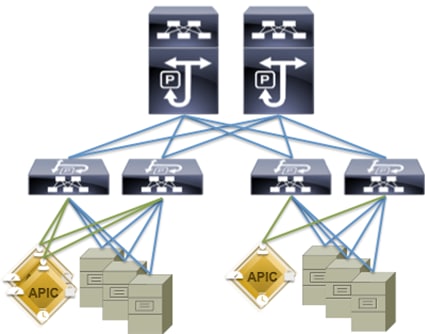

Application Policy Infrastructure Controller (APIC)

The APIC is the unified point of automation, management, monitoring, and programmability for the Cisco Application Centric Infrastructure. The APIC supports the deployment, management, and monitoring of any application anywhere, with a unified operations model for physical and virtual components of the infrastructure. The APIC programmatically automates network provisioning and Control that is based on the application requirements and policies. It is the central control engine for the broader cloud network; it simplifies management and allows flexibility in how application networks are defined and automated. It also provides northbound REST APIs. The APIC is a distributed system that is implemented as a cluster of many controller instances.

Figure 9 APIC Appliance

Cisco ACI Topology

Cisco ACI topology is spine-leaf architecture. Each leaf is connected to each spine. It uses internal routing protocol; Intermediate System to Intermediate System (IS-IS) to establish IP connectivity throughout the fabric among all the nodes including spine and leaf. To transport tenant traffic across the IP fabric, integrated VxLAN overlay is used. The broadcast ARP traffic coming from the end point or hosts to the leaf are translated to unicast ARP in the fabric.

The forwarding is done as a host based forwarding. In the leaf layer the user information such as username, IP address, locations, policy groups etc., are decoupled from the actual forwarding path and encode them into the fabric VxLAN header and is forwarded to the desired destination.

Each spine has the complete forwarding information about the end hosts that are connected to the fabric and on every leaf have the cached forwarding information. The leaf only needs to know the hosts it needs to talk to. For example if Server Rack-1 has to send some information to Server Rack-2, When packet comes in the ingress leaf (LEAF_1) it will encapsulate the information into the VxLAN header and forward that information to LEAF_2. If the LEAF_1 does not have information about the LEAF_2, it uses Spine as a proxy and since Spine has all the complete information about the entire end host connected to the fabric, it will resolve the egress leaf and forward the packet to the destination.

To the outside world, routing protocols can be used to learn outside prefixes or static routing can be used instead. The outside learned routes will be populated into the fabric or to the other leafs with Multiprotocol BGP (M-BGP). In M-BGP topology the spine nodes acts as route reflectors.

The Network topology of ACI is as depicted below:

Figure 10 Network Topology Based on Cisco ACI

The Cisco ACI infrastructure incorporates the following components:

1. Two Cisco Nexus 9508 Spine Switch

2. Cisco ACI Spine Line Card for Nexus 9508

3. Cisco Nexus 9396 Leaf Switch for Data Traffic

4. Cisco APIC-L1-Cluster with three APIC-L1 appliances

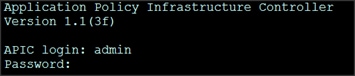

Once the configuration is completed, the APIC will Boot its APIC IOS Image and will ask for the login information. The default username is “admin” and the password is the one that was set during the initial configuration.

Cisco UCS Datacenter Solution for SAP HANA

Cisco and SAP have partnered to deliver an optimized UCS architecture for running SAP HANA, which provides fast transaction processing with real-time insights. Cisco UCS provides high-bandwidth connectivity between SAP HANA nodes and the persistency layer; this also allows SAP HANA deployments to scale more easily and transparently. Further, the Cisco UCS technology allows customers to scale dynamically as requirements and demand change.

Running SAP HANA on the Cisco UCS server platform offers the opportunity to reduce the hardware and maintenance costs associated with running multiple data warehouses, operational systems, and analytical systems. A principal design element of UCS is to break away from old static IT datacenter models and deliver on a new IT model that pools server, storage, and networking resources into a flexible physical and/or virtualized environment that can be provisioned (or reprovisioned) as workloads and business demands require.

This design guide provides an opportunity to integrate with any of the existing Cisco Datacenter Solution for SAP HANA CVD). The following are the Cisco UCS based designs guides that can be used in conjunction with this CVD.

| SAP Solutions on Cisco UCS |

|

(Used in this CVD for reference) |

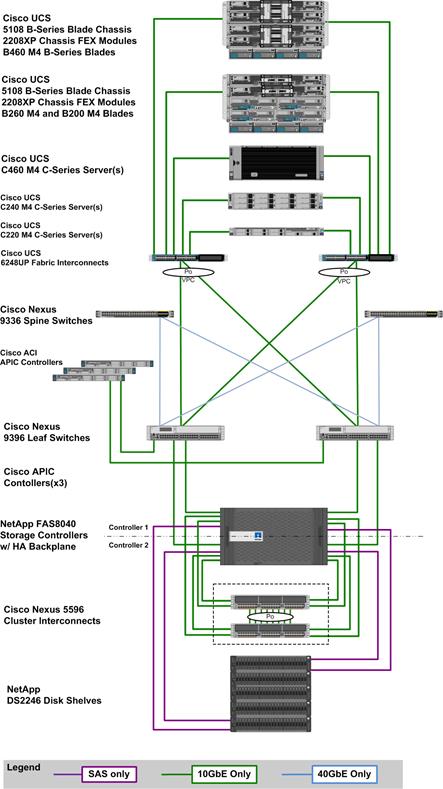

Architecture referenced in this guide (Flexpod Datacenter for SAP Solution with Cisco ACI)

The FlexPod Datacenter solution for SAP HANA with NetApp FAS storage provides an end-to-end architecture with Cisco, NetApp and VMware technologies that demonstrate support for multiple SAP HANA workloads with high availability and server redundancy. The architecture uses UCS Manager with combined Cisco UCS B-Series and C-Series Servers with NetApp FAS 8000 series storage attached to the Nexus 9396PX switches for NFS access and iSCSI. The C-Series Rack Servers are connected directly to Cisco UCS Fabric Interconnect with single-wire management feature. This infrastructure is deployed to provide PXE and iSCSI boot options for hosts with file-level and block-level access to shared storage. VMware vSphere 5.5 is used as server virtualization architecture.

The figure below shows the FlexPod Datacenter for SAP Solution with ACI. It highlights the FlexPod hardware components and the network connections for a configuration with IP- based storage.

Figure 11 FlexPod Datacenter for SAP Solution with ACI

The reference hardware configuration includes:

Cisco Unified Computing System

· 2 x Cisco UCS 6248UP 48-Port or 6296UP 96-Port Fabric Interconnects

· 2 x Cisco UCS 5108 Blade Chassis with 2 x Cisco UCS 2204 Fabric Extenders with 4x 10 Gigabit Ethernet interfaces

· 2 x Cisco UCS B460 M4 High-Performance Blade Servers with 2x Cisco UCS Virtual Interface Card (VIC) 1280 and 2x Cisco UCS Virtual Interface Card (VIC) 1240

· 2 x Cisco UCS B260 M4 High-Performance Blade Servers with 1x Cisco UCS Virtual Interface Card (VIC) 1280 and 1x Cisco UCS Virtual Interface Card (VIC) 1240

· 1 x Cisco UCS C460 M4 High-Performance Rack-Mount Servers with 2x Cisco UCS Virtual Interface Card (VIC) 1225.

· 4 x Cisco UCS B200 M4 High-Performance Blade Servers with Cisco UCS Virtual Interface Card (VIC) 1340

· 1 x Cisco UCS C220 M4 High-Performance Blade Servers with Cisco UCS Virtual Interface Card (VIC) 1225

· 1 x Cisco UCS C240 M4 High-Performance Blade Servers with Cisco UCS Virtual Interface Card (VIC) 1225

· 2 x Cisco UCS C220 M3 for Management Servers with Cisco UCS Virtual Interface Card (VIC) 1225 and RAID controller with Internal Disks

· 2 x Cisco UCS 5108 Blade Chassis with 2 x Cisco UCS 2204 Fabric Extenders with 4x 10 Gigabit Ethernet interfaces

· 2 x Cisco UCS B460 M4 High-Performance Blade Servers with 2x Cisco UCS Virtual Interface Card (VIC) 1280 and 2x Cisco UCS Virtual Interface Card (VIC) 1240

· 2 x Cisco UCS B260 M4 High-Performance Blade Servers with 1x Cisco UCS Virtual Interface Card (VIC) 1280 and 1x Cisco UCS Virtual Interface Card (VIC) 1240

· 1 x Cisco UCS C460 M4 High-Performance Rack-Mount Servers with 2x Cisco UCS Virtual Interface Card (VIC) 1225.

· 4 x Cisco UCS B200 M4 High-Performance Blade Servers with Cisco UCS Virtual Interface Card (VIC) 1340

· 1 x Cisco UCS C220 M4 High-Performance Blade Servers with Cisco UCS Virtual Interface Card (VIC) 1225

· 1 x Cisco UCS C240 M4 High-Performance Blade Servers with Cisco UCS Virtual Interface Card (VIC) 1225

· 2 x Cisco UCS C220 M3 for Management Servers with Cisco UCS Virtual Interface Card (VIC) 1225 and RAID controller with Internal Disks

Cisco ACI

· 2 x Cisco Nexus 9396 Leaf Switch for 10 Gigabit Ethernet connectivity between the two UCS Fabric Interconnects

· 2 x Cisco Nexus 9336 Spine Switch for 40 Gigabit Ethernet connectivity for ACI fabric

· 3 x Cisco APIC Controllers for centralized management of ACI fabric

NetApp FAS8040 Storage

· NetApp FAS8040HA Storage Clustered Data ONTAP

· 4 x NetApp Disk Shelf DS2246 with 24x 600GB 10k 2,5” SAS Disks

· 2 x Cisco Nexus 5596 Switch for FAS 8000 Cluster Interconnect

· Server virtualization is achieved by VMware vSphere 5.5.

Although this is the base design, each of the components can be scaled easily to support specific business requirements. Additional servers or even blade chassis can be deployed to increase compute capacity without additional Network components. Two Cisco UCS 6248UP, 48 port Fabric interconnect can support up to:

· 20 Cisco UCS B-Series B460 M4 or 40 B260 M4 Server with 10 Blade Server Chassis

· 20 Cisco UCS C460 M4 Sever

· 40 Cisco UCS C220 M4/C240 M4 Server

For every eight Cisco UCS Server, One NetApp FAS8040 HA pair with Clustered Data ONTAP is required to meet the SAP HANA storage performance. While adding compute and storage for scaling, it is required to increase the network bandwidth between Cisco UCS Fabric Interconnet and Cisco Nexus 9000 switch. Addition of each NetApp Storage requires additional four 10 GbE connectivity from each Cisco UCS Fabric Interconnect to Cisco Nexus 9000 switches.

![]() The number of Cisco UCS C-Series or Cisco UCS B-Series Servers and the NetApp FAS storage type depends on the number of SAP HANA instances. SAP specifies the storage performance for SAP HANA, based on a per server rule independent of the server size. In other words, the maximum number of servers per storage will remain the same if you want to use Cisco UCS B200 M4 with 192GB physical memory or Cisco UCS B460 M4 with 2TB physical memory.

The number of Cisco UCS C-Series or Cisco UCS B-Series Servers and the NetApp FAS storage type depends on the number of SAP HANA instances. SAP specifies the storage performance for SAP HANA, based on a per server rule independent of the server size. In other words, the maximum number of servers per storage will remain the same if you want to use Cisco UCS B200 M4 with 192GB physical memory or Cisco UCS B460 M4 with 2TB physical memory.

Figure 12 shows a block diagram of a complete SAP Landscape built using the FlexPod architecture. It composed of multiple SAP HANA systems and SAP applications with shared infrastructure as illustrated in the figure. The FlexPod Datacenter reference architecture for SAP solutions supports SAP HANA system in both Scale-Up mode (bare metal/ virtualization) and Scale-Out mode with multiple servers with the shared infrastructures.

Virtualized SAP application servers with VMware vSphere 5.5 allows application servers to run on the same infrastructure as the SAP HANA database. The FlexPod datacenter solution manages the communication between the application server and the SAP HANA database. This approach enhances system performance by improving bandwidth and latency. It also improves system reliability by including the application server in the disaster-tolerance solution with the SAP HANA database.

Figure 12 Shared Infrastructure Block Diagram

Flexpod Datacenter for SAP Solution with Cisco ACI describes detailed procedures for the reference design and outlines the network, compute and storage configurations and deployment process for running SAP HANA on FlexPod platform.

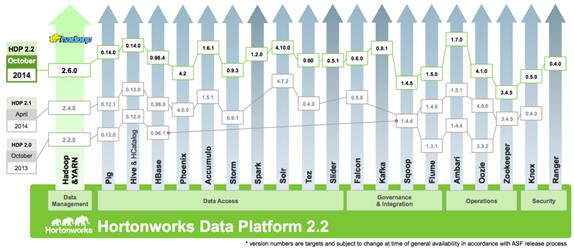

Hortonworks Data Platform (HDP 2.2)

The Hortonworks Data Platform 2.2 (HDP 2.2) is an enterprise-grade, hardened Apache Hadoop distribution that enables you to store, process, and manage large data sets.

Apache Hadoop is an open-source software framework that allows for the distributed processing of large data sets across clusters of computers using simple programming models. It is designed for high-availability and fault-tolerance, and can scale from a single server up to thousands of machines.

The Hortonworks Data Platform combines the most useful and stable versions of Apache Hadoop and its related projects into a single tested and certified package. Hortonworks offers the latest innovations from the open source community, along with the testing and quality you expect from enterprise-quality software.

The Hortonworks Data Platform is designed to integrate with and extend the capabilities of existing investments in data applications, tools, and processes. With Hortonworks, one can refine, analyze, and gain business insights from both structured and unstructured data – quickly, easily, and economically.

Key Features of HDP 2.2

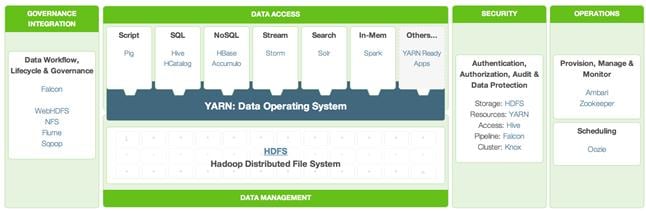

Hortonworks Data Platform enables Enterprise Hadoop: the full suite of essential Hadoop capabilities that are required by the enterprise and that serve as the functional definition of any data platform technology. This comprehensive set of capabilities is aligned to the following functional areas: Data Management, Data Access, Data Governance and Integration, Security, and Operations.

HDP 2.2 incorporates many new innovations that have happened in Hadoop and its supporting ecosystem of projects. Some of the key projects are listed below

Enterprise SQL at Scale in Hadoop

While YARN has allowed new engines to emerge for Hadoop, one of the popular integration point with Hadoop continues to be SQL and Apache Hive is still the defacto standard.

New capabilities in HDP 2.2 include:

· Updated SQL Semantics for Hive Transactions for Update and Delete: ACID transactions provide atomicity, consistency, isolation, and durability. This helps with streaming and baseline update scenarios for Hive such as modifying dimension tables or other fact tables.

· Improved Performance of Hive with a Cost Based Optimizer: The cost based optimizer for Hive, uses statistics to generate several execution plans and then chooses the most efficient path as it relates system resources required to complete the operation. This presents a major performance increase for Hive.

Apache Hive

Apache Hive is a data warehouse infrastructure built on top of Hadoop for providing data summarization, query, and analysis. Apache Hive supports analysis of large datasets stored in Hadoop’s HDFS and compatible file systems. It provides an SQL-like language called HiveQL(Hive Query Language) while maintaining full support for map/reduce.

Apache Tez

Apache Tez is an extensible framework for building high performance batch and interactive data processing applications, coordinated by YARN in Apache Hadoop. Tez improves the MapReduce paradigm by dramatically improving its speed, while maintaining MapReduce’s ability to scale to petabytes of data. Important Hadoop ecosystem projects like Apache Hive and Apache Pig use Apache Tez, as do a growing number of third party data access applications developed for the broader Hadoop ecosystem.

Kafka for Processing the Internet of Things

Apache Kafka has quickly become the standard for high-scale, fault-tolerant, publish-subscribe messaging system for Hadoop. It is often used with Storm and Spark so as to stream events in to Hadoop in real time and its application within the “internet of things” uses cases is tremendous.

Apache Flume

Flume is a distributed, reliable, and available service for efficiently collecting, aggregating, and moving large amounts of streaming data into the Hadoop Distributed File System (HDFS). It has a simple and flexible architecture based on streaming data flows; and is robust and fault tolerant with tunable reliability mechanisms for failover and recovery.

Apache Sqoop

Sqoop is a tool designed for efficiently transferring bulk data between Apache Hadoop and structured datastores such as relational databases. Sqoop imports data from external structured datastores into HDFS or related systems like Hive and HBase. Sqoop can also be used to extract data from Hadoop and export it to external structured datastores such as relational databases and enterprise data warehouses. Sqoop works with relational databases such as: Teradata, Netezza, Oracle, MySQL, Postgres, and HSQLDB.

Apache Knox

Knox provides perimeter security so that the enterprise can confidently extend Hadoop access to more of those new users while also maintaining compliance with enterprise security policies. Knox also simplifies Hadoop security for users who access the cluster data and execute jobs. It integrates with prevalent identity management and SSO systems and allows identities from those enterprise systems to be used for seamless, secure access to Hadoop clusters.

The Hortonworks Data Platform is the foundation for the next-generation enterprise data architecture – one that addresses both the volume and complexity of today’s data.

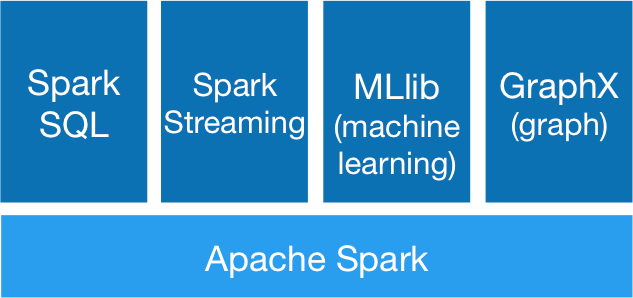

Apache Spark 1.4.1

Apache Spark is a fast, in-memory data processing engine with elegant and expressive development APIs in Scala, Java, and Python that allow data workers to efficiently execute machine learning algorithms that require fast iterative access to datasets. Spark on Apache Hadoop YARN enables deep integration with Hadoop and other YARN enabled workloads in the enterprise.

With YARN, Hadoop can now support many types of data and application workloads; Spark on YARN becomes yet another workload running against the same set of hardware resources.

HDP’s YARN-based architecture enables multiple applications to share a common cluster and dataset while ensuring consistent levels of service and response. Now Spark is one of the many data access engines that works with YARN and that is supported in an HDP enterprise data lake. Spark on YARN provides a very powerful way to derive value from any data, any application, anywhere.

![]() Note: For more information visit http://spark.apache.org/

Note: For more information visit http://spark.apache.org/

SAP HANA Vora

SAP HANA Vora™ is an in-memory query engine that plugs into the Apache Spark execution framework to provide enriched interactive analytics on Hadoop. SAP HANA Vora extends the HANA–like analytics experience to ALL data. SAP HANA Vora plugs into the Apache SPARK framework which is part of Apache Hadoop, and allows us to bring OLAP-like analytics and business semantics of the data in and around the Hadoop ecosystem. This is important- to reach meaningful contextual information when new unstructured data such as data from IoT sensors, machine telemetry or from social media, comes together with business data such as financial records, business goals, maintenance records, and employment data. It is only when these two different data sets meet, that business meaning is made. Meaningful business results require that we embrace ALL data, in a contextual way, to drive analytics driven outcomes.

SAP HANA Vora provides the following features:

· In-memory query engine running on Apache Spark execution framework

· Compiled queries for accelerated processing across nodes

· Enhanced Spark SQL semantics with hierarchies for analytical processing

· Enhanced mashup application programming interface (API) for easier access to enterprise application data for machine learning workloads

SAP HANA Vora can benefit customers in industries where highly interactive big data analytics in business process context is paramount, such as financial services, telecommunications, healthcare and manufacturing. Examples include:

· Mitigate risk and fraud by detecting new anomalies in financial transactions and customer history data.

· Optimize telecommunication bandwidth by analyzing traffic patterns to help avoid network bottlenecks and improve network quality of service (QoS).

· Deliver preventive maintenance and improve product re-call process by analyzing bill-of-material, services records and sensor data together.

Requirements

Physical Layout for the Solution

The physical layout for the solution is shown in Table 2 below. Each rack consists of two vertical PDUs. The solution consists of 3 Cisco R42610 racks. The Nexus 9396 leaf switch and the Fabric Interconnect s are mounted on rack2, the APIC appliances are distributed across rack1 to rack3. Similarly, nexus 9508 spine switch is mounted in rack2 for easier cabling between the spine and leaf switches. All the Switches and UCS Servers are dual connected to vertical PDUs for redundancy; thereby, ensuring availability during power source failure.

|

|

Rack 1 |

Rack 2 |

Rack 3 |

| 1. |

N9K-C9396PX |

FI-A |

N9K-C9396PX |

| 2. |

|||

| 3. |

|

FI- B |

|

| 4. |

|||

| 5. |

|

|

|

| 6. |

|

||

| 7. |

|

|

|

| 8. |

|

||

| 9. |

APIC-L1 |

APIC-L1 |

APIC-L1 |

| 10. |

|||

| 11. |

UCS C240M4

|

|

UCS C240M4

|

| 12. |

|||

| 13. |

UCS C240M4

|

|

UCS C240M4

|

| 14. |

|||

| 15. |

UCS C240M4

|

|

UCS C240M4

|

| 16. |

|||

| 17. |

UCS C240M4

|

N9k-C9508 |

UCS C240M4

|

| 18. |

|||

| 19. |

UCS C240M4

|

UCS C240M4

|

|

| 20. |

|||

| 21. |

UCS C240M4

|

UCS C240M4

|

|

| 22. |

|||

| 23. |

UCS C240M4

|

UCS C240M4

|

|

| 24. |

|||

| 25. |

UCS C240M4

|

UCS C240M4

|

|

| 26. |

|||

| 27. |

UCS C240M4

|

UCS C240M4

|

|

| 28. |

|||

| 29. |

UCS C240M4

|

UCS C240M4

|

|

| 30. |

N9k-C9508 |

||

| 31. |

UCS C240M4

|

UCS C240M4

|

|

| 32. |

|||

| 33. |

UCS C240M4

|

UCS C240M4

|

|

| 34. |

|||

| 35. |

UCS C240M4

|

UCS C240M4

|

|

| 36. |

|||

| 37. |

UCS C240M4

|

UCS C240M4

|

|

| 38. |

|||

| 39. |

UCS C240M4

|

UCS C240M4

|

|

| 40. |

|||

| 41. |

UCS C240M4

|

UCS C240M4

|

|

| 42. |

Software Distributions and Versions

The required versions of software distributions are listed below.

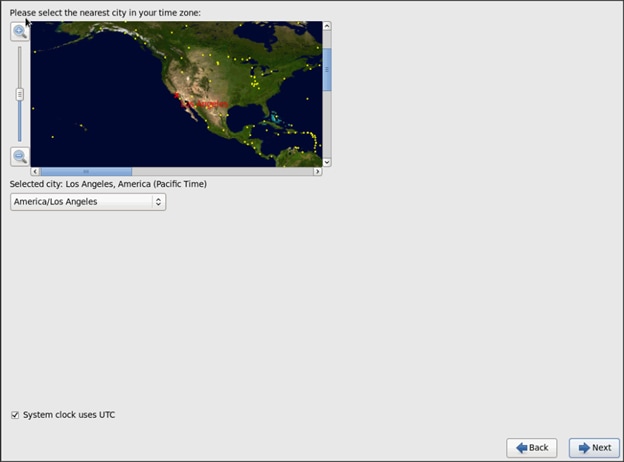

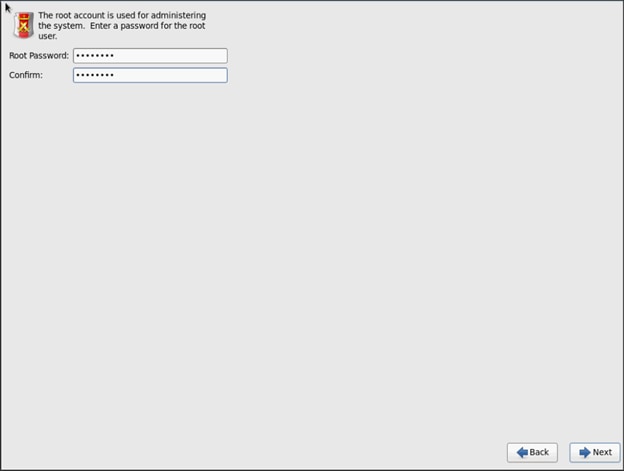

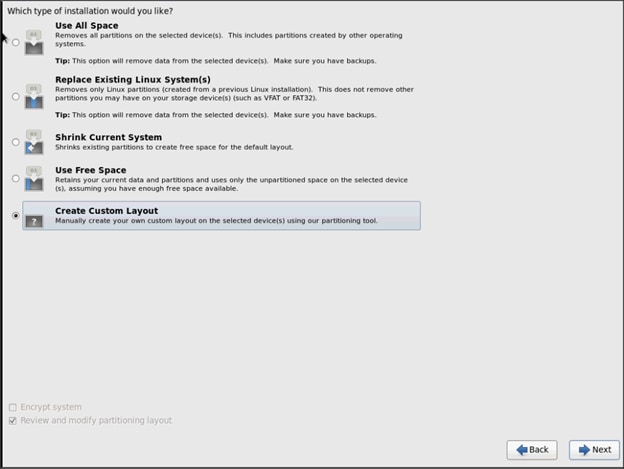

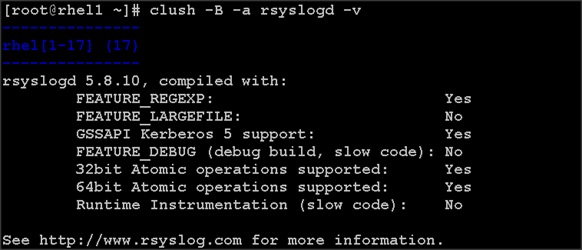

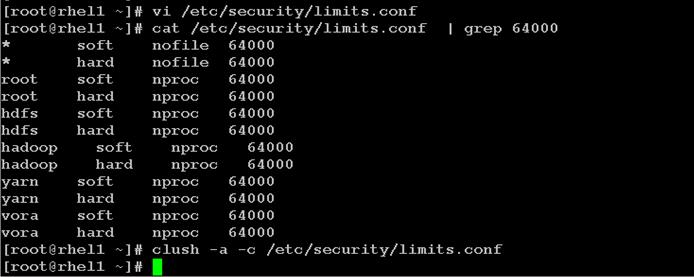

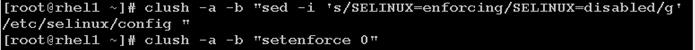

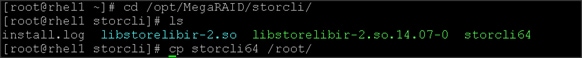

Red Hat Enterprise Linux (RHEL)

The operating system supported is Red Hat Enterprise Linux 6.6. For more information visit http://www.redhat.com

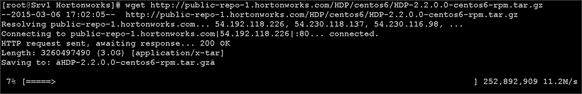

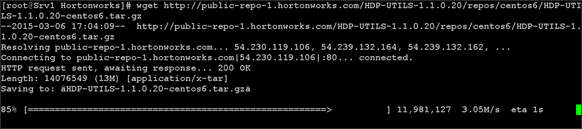

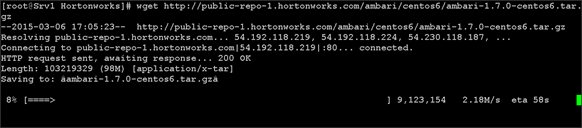

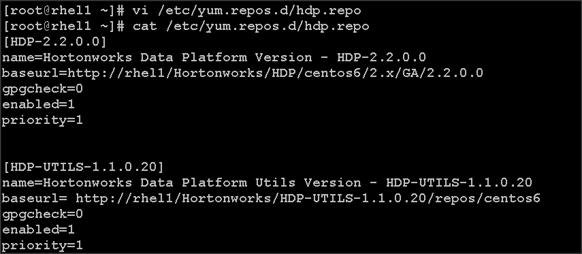

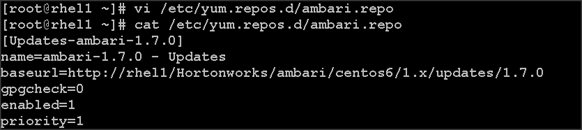

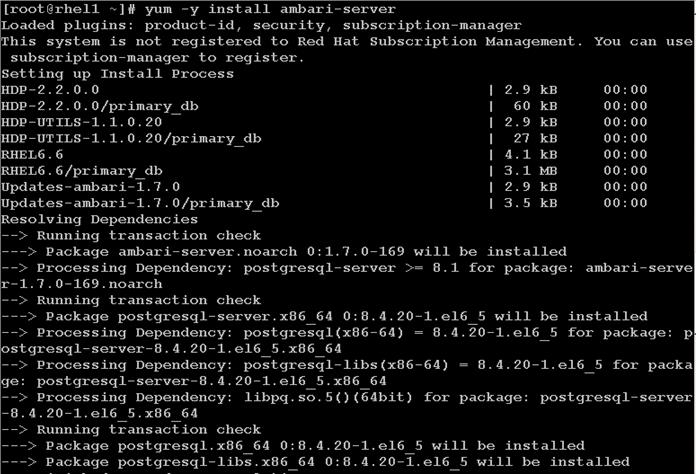

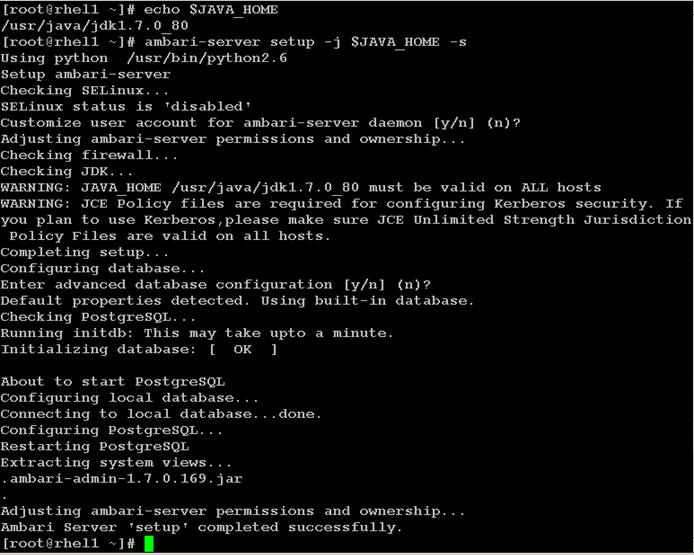

Hortonworks Data Platform (HDP 2.2)

The Hortonworks Data Platform supported is HDP 2.0. For more information visit http://www.hortonworks.com.

Software Versions

The software versions tested and validated in this document are shown in Table 3 below.

| Layer |

Component |

Version or Release |

| Network |

Cisco ACI OS |

11.1(3f) |

| APIC OS |

1.1 (3f) |

|

| Compute |

Cisco UCS 6296UP |

UCS 2.2(5b) |

| Cisco UCS VIC1227 Firmware |

4.0(1d) |

|

| Cisco UCS VIC1227 Driver |

2.1.1.66 |

|

| Storage |

LSI SAS 3108 |

24.5.0-0020 |

| Software

|

Red Hat Enterprise Linux Server |

6.6 (x86_64) |

| Cisco UCS Manager |

2.2(5b) |

|

| HDP |

2.2 |

|

| Ambari |

1.7 |

|

|

|

Spark |

1.4.1 (preview) |

|

|

SAP HANA Vora |

1.1 |

![]() The latest drivers can be downloaded from the link: https://software.cisco.com/download/release.html?mdfid=283862063&flowid=25886&softwareid=283853158&release=1.5.7d&relind=AVAILABLE&rellifecycle=&reltype=latest

The latest drivers can be downloaded from the link: https://software.cisco.com/download/release.html?mdfid=283862063&flowid=25886&softwareid=283853158&release=1.5.7d&relind=AVAILABLE&rellifecycle=&reltype=latest

![]() The Latest Supported RAID controller Driver is already included with the RHEL 6.6 operating system.

The Latest Supported RAID controller Driver is already included with the RHEL 6.6 operating system.

![]() Cisco UCS C240/C220 M4 Rack Servers are supported from Cisco UCS firmware 2.2(3d) onward.

Cisco UCS C240/C220 M4 Rack Servers are supported from Cisco UCS firmware 2.2(3d) onward.

System Architecture

The system architecture includes Cisco UCS C240 M4 servers, based on Cisco UCS Integrated Infrastructure for Big Data.

The ACI fabric consists of three major components: The Application Policy Infrastructure Controller (APIC), spine switches, and leaf switches. These three components handle both the application of network policy and the delivery of packets.

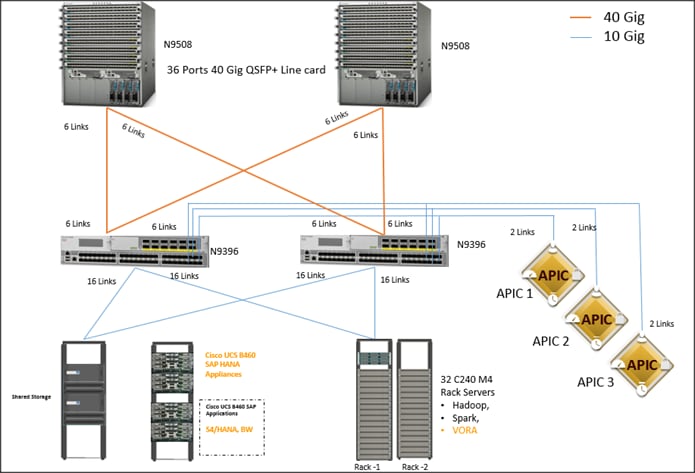

The system architecture consists of a pair of FIs connecting to ACI having two N9508 switches acting as a Spine and two Nexus 9396 as the leaf switches and three APIC-L1 as an APIC appliance.

The following explains the system architecture:

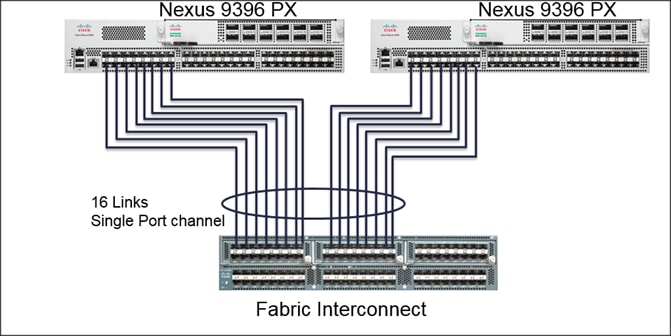

· The 32 server are rack mounted and are connected to a pair of FI representing a domain through 10GE link (dual 10GE link to a pair of FI)

· This UCS domain is connected to a pair of Nexus 9396 which is the ACI Fabric leaf nodes. Here 10GEx16 links from each FI are connected to Nexus 9396. This is done through a port-channel of 8 links connected to each of the Nexus 9396

· Nexus 9396 receives the 16x10GE from each pair of FI as a vPC (Virtual Port-Channel), i.e., 8 ports coming from each single FI as an uplink to the leaf. There are 2 vPC for this UCS domain in each of 9396 connecting to the pair of FIs.

· Each leaf is connected to Spines via 12 x 40 Gig connectivity cables.

· The three APIC’s are connected to two leaves (Nexus 9396) via 10 gig SFP cable.

Figure 13 below shows the overall system architecture and physical layout of the solution.

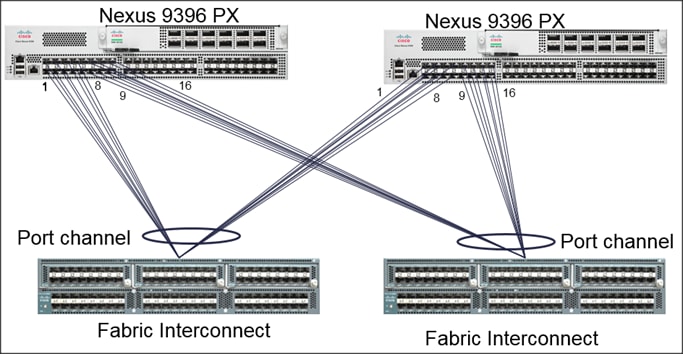

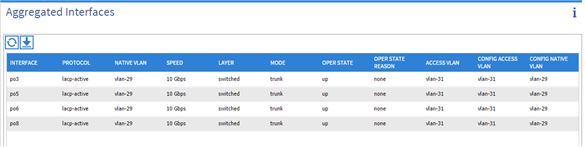

Figure 14 below show the connectivity between the leaf switches and fabric interconnect, where port channeling has been configured on Fabric Interconnect. This port channeling helps to aggregate the bandwidth towards the uplink leaf switches.

Figure 14 Fabric Interconnect Connectivity

Figure 15 below shows the connectivity between the leaf switches and fabric interconnect, where vPC has been configured on leaf switches through the APIC. These vPC ports are the same ports that were configured as port-channels in the fabric interconnect.

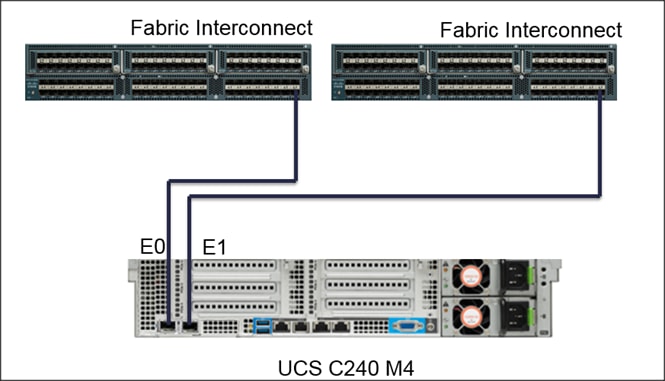

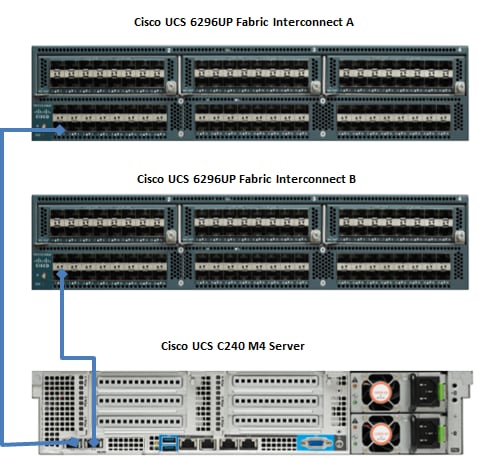

Figure 16 below shows the connectivity between the one C240 M4 servers and two Fabric.

Figure 16 Cisco UCS C240 M4 Server Connectivity

Scaling the Architecture

The UCS Servers are directly connected to the Fabric Interconnect (FI) which connects to the Nexus 9K switches. This mode allows using the UCS Manager capabilities in FI for provisioning the servers.

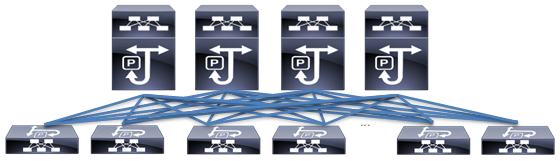

Scaling the Architecture Further with Additional Spine Switches

The physical network of the Cisco Application Centric Infrastructure is built around leaf-spine architecture. It is possible to scale this infrastructure, immensely, by adding additional spine switches. The ACI infrastructure supports up to 12 spine switches.

Figure 17 Cisco ACI Fabric with Multiple Spine Switches

With a 12-spine design, each leaf switch can be connected to up to 12 spine switches. Allowing for tens of thousands of servers to be part of this infrastructure – being interconnected by a non-blocking fabric.

SAP HANA and SAP HANA VORA scalability

The Base configuration 4 HANA Appliances (B460 M4/C460 M4 servers) + 32 C240 M4 (Cisco UCS Integrated Infrastructure for Big Data).

Recommended building block is made up of a set of 16 C240 M4 servers for every two HANA servers.

![]() The VORA tier can scale-out independent of the HANA tier if necessary.

The VORA tier can scale-out independent of the HANA tier if necessary.

| SAP HANA Tier (B460/C460) |

SAP HANA Vora Tier (C240 M4) |

| 4 servers |

32 servers |

| 8 servers |

64 servers |

| 16 servers |

128 servers |

Network Configuration

The network configuration includes configuring the APIC, leaf, spine switches and Fabric Interconnect and deploying various application profiles and policies. In order to achieve this we first need to register the connected Nexus 9K switches to the APIC so that these switches become the part of the ACI fabric. Once the switch is registered the communication between the spine and leaf are completed.

The admin is the only account enabled by default after the APIC is configured and it is always a good practice to create other user accounts with different privilege levels to make the APIC and the network secure. For this purpose we create a local or remote user depending on requirement.

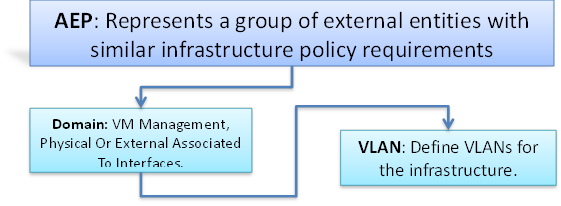

Adding a management access is required in the ACI to let ACI know about any physical or virtual domain that is connected to it. By adding management access, APIC will control the physical interface and assign the policies to this interface. This is achieved by configuring Attachable Access Entity Profile (AEP). AEP requires having the domain and VLAN pool that the ACI fabric will be using to communicate with various devices attached to it.

![]() For more detail on AEP please refer “Adding Management Access” section.

For more detail on AEP please refer “Adding Management Access” section.

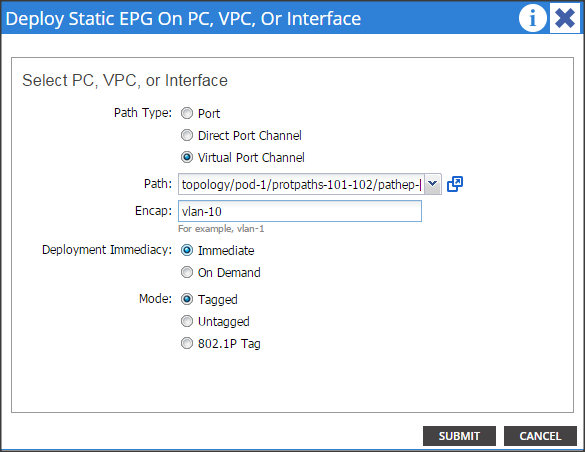

In this CVD, two pair of FIs representing two domains are connected to the pair of leaf switch. The uplink in the FIs is connected to the leaf via the port channeling (created in FI) and vPC is created at the leaf switches. The vPC allows single device to use a PortChannel across two upstream devices, eliminating Spanning Tree Protocol blocked ports which in turns provides a loop-free topology. With the use of vPC provides high availability and link-level resiliency.

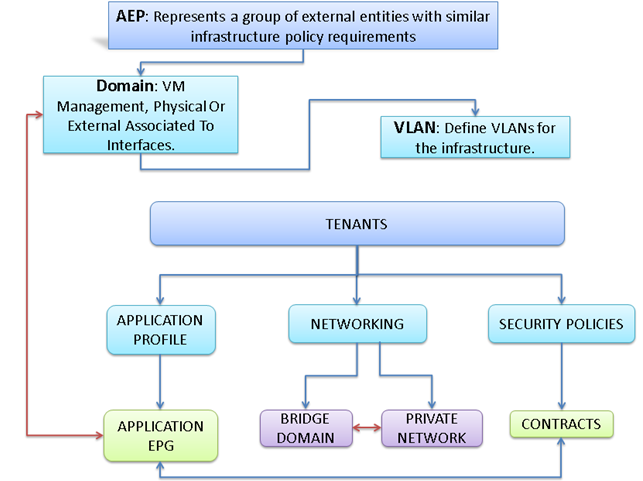

Depending on the number of VLANs created in the FI, to trunk these vlans across the ACI fabric an Attachable Entity Profile (AEP) is required. An AEP provisions the VLAN pool (and associated VLANs) on the leaf, these VLAN pools are defined under the domain created within the AEP. A domain could be various external entities such as bare metal servers, hypervisors, VM management, Layer 2 or Layer 3 domains. The VLANs are not actually enabled on the port. No traffic flows unless an EPG is deployed on the port. An EPG acts as a separate entity which is analogous to VLAN. A tenant needs to be created before an EPG is defined.

A tenant contains policies that enable qualified users domain-based access control. Application profile, security policies and network are the elements of Tenants. An EPG for each VLAN is created under the application profile. Since EPG represent VLANs, a switch virtual interface (SVI) is needed to provide the Layer 3 processing for packets from all switch ports associated with the VLAN. A bridge domain needs to be created which acts as switch virtual interface (SVI) for this purpose. Now, for the inter-Vlan communication, contracts need to be created to achieve communication among each EPG. Contracts are policies that enable inter-End Point Group (inter-EPG) communication. These policies are the rules that specify communication between application tiers.

![]() For more details on Tenant please refer to the “Adding Tenant” section.

For more details on Tenant please refer to the “Adding Tenant” section.

The relationship between the AEP, Tenants and its elements is shown in Figure 18 below.

Figure 18 AEP, Tenants, and Elements

IP Address Assignment

The IP address of UCS and ACI management are configured as out of band management access through the management switch.

APIC-1 10.0.141.8/24 (Primary)

APIC-2 10.0.141.9/24

APIC-3 10.0.141.10/24

Pod - 1

UCSM 10.0.141.20/24

FI-A 10.0.141.21/24

FI-B 10.0.141.22/24

KVM 10.0.141.11/24 – 10.0.141.90/24

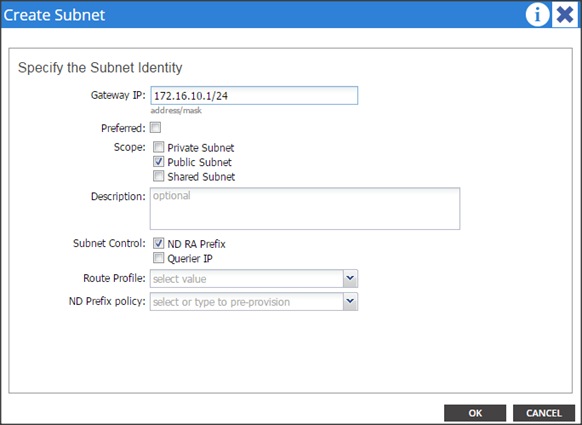

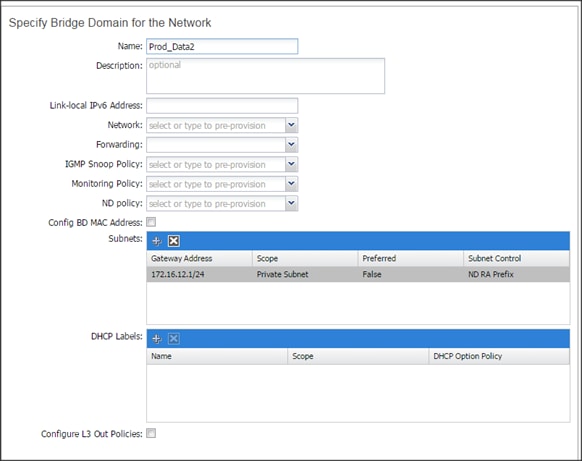

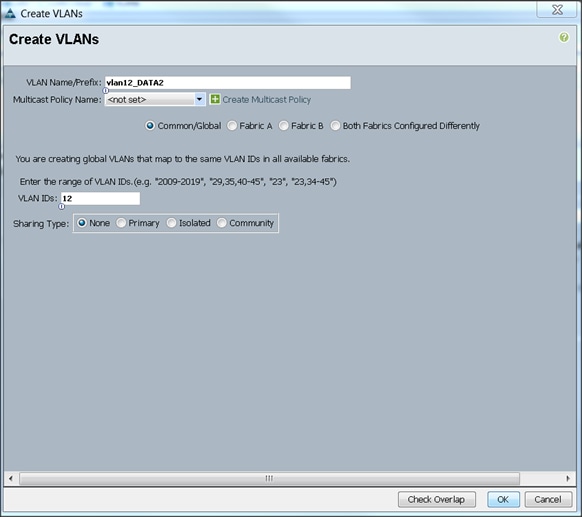

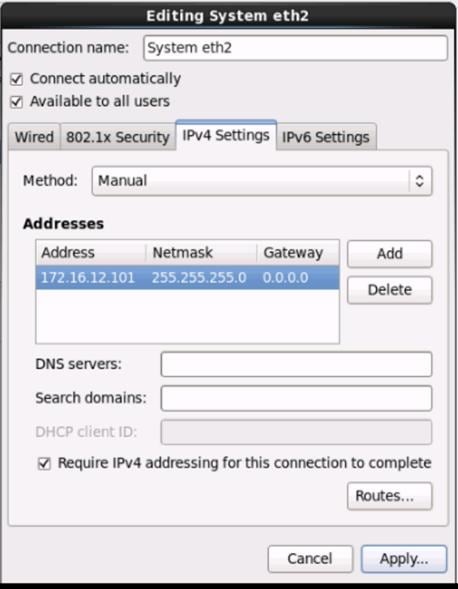

Table 8 VLan ID and IP Address

| Vlan ID |

Tenant Production |

| 10 (Mgmt) |

172.16.10.0/24 |

| 11 (Data1) |

172.16.11.0/24 |

| 12 (Data2) |

172.16.12.0/24 |

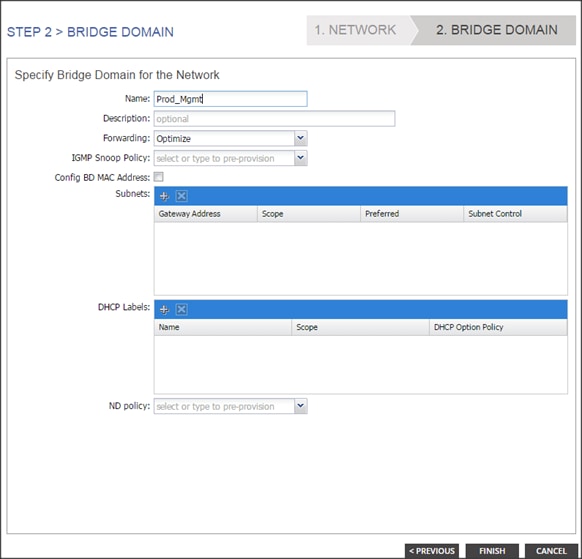

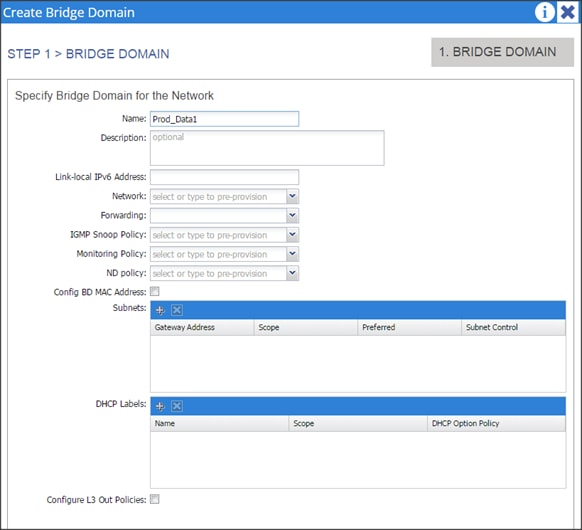

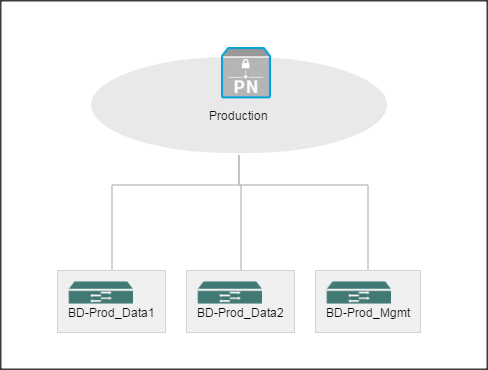

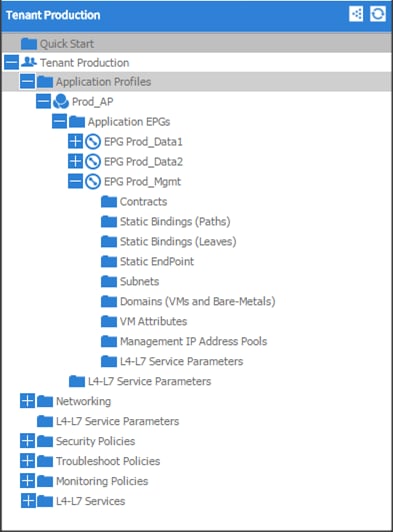

Configuration Parameters for the Tenants

Tenant: Production

Private Network: Production

Bridge Domain: Prod_Mgmt

Bridge Domain: Prod_Mgmt

![]() Prod_Data1

Prod_Data1

Prod_Data2

Prod_Data2

App. Profile: Production

App. EPG: Prod_Mgmt

Prod_Data1

Prod_Data2

Configuration of APIC

This section describes loading and configuring the APIC.

Once the APIC appliance is booted for the first time, the APIC console presents a series of initial setup options. For many options, you can press Enter to choose the default setting that is displayed in brackets. At any point in the setup dialog, you can restart the dialog from the beginning by pressing Ctrl-C.

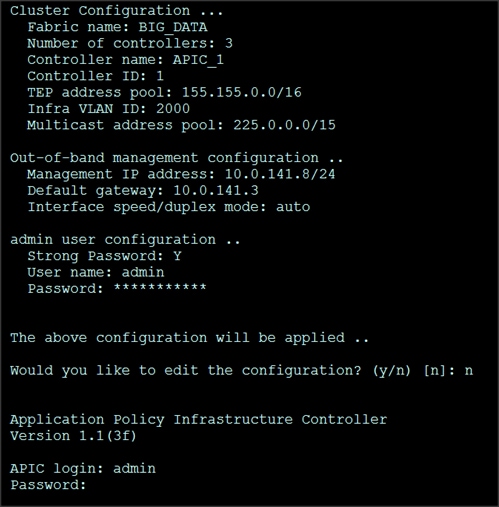

The initial configuration of the APIC is shown below.

1. Enter the fabric name [ACI Fabric1]:

2. Enter the number of controllers in the fabric (1-9) [3]:3

3. Enter the controller ID (1-3) [1]:1

4. Enter the controller name [apic1]:APIC_1

5. Enter address pool for TEP addresses [10.0.0.0/16]: 155.155.0.0/16

6. Enter the VLAN ID for infra network (1-4094) [4]: 2000

7. Out-of-band management configuration

8. Enter the IP address for out-of-band management: 10.0.141.8/24

9. Enter the IP address of the default gateway [None]: 10.0.141.1

10. Administrator user configuration.

11. Enable strong passwords? [Y]

12. Enter the password for admin

A screenshot of the configuration is shown below.

13. Repeat steps 1 through 12 for the additional 2 APICs with unique IP addresses for each of them.

Once the configuration is completed, the APIC will Boot its APIC IOS Image and will ask for the login information. The default username is “admin” and the password is the one that was set during the initial configuration.

Switch Discovery with the APIC

The APIC is a central point of automated provisioning and management for all the switches that are part of the ACI fabric. A single data center might include multiple ACI fabrics, each with their own APIC cluster and Cisco Nexus 9000 Series switches that are part of the fabric. To ensure that a switch is managed only by a single APIC cluster, each switch must be registered with that specific APIC cluster that manages the fabric. The APIC discovers new switches that are directly connected to any switch it currently manages. Each APIC instance in the cluster first discovers only the leaf switch to which it is directly connected. After the leaf switch is registered with the APIC, the APIC discovers all spine switches that are directly connected to the leaf switch. As each spine switch is registered, that APIC discovers all the leaf switches that are connected to that spine switch. This cascaded discovery allows the APIC to discover the entire fabric topology in a few simple steps.

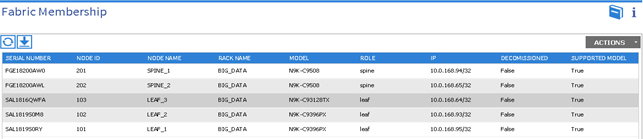

Switch Registration with the APIC Cluster

Once the switch is discovered by the APIC cluster it needs to be registered in the APIC to make it as a part of the fabric.

Prerequisite: All switches must be physically connected and booted with the correct ACI Image.

Using a web browser connect to the out-of-band management ip address [10.0.141.8] configured in the initial configuration.

1. On the menu bar, choose FABRIC > INVENTORY. In the Navigation pane, choose the appropriate pod.

2. In the Navigation pane, expand the pod, and click Fabric Membership.

In the Work pane, in the Fabric Membership table, a single leaf switch is displayed with an ID of 0. It is the leaf switch that is connected to APIC.

To configure the ID, double-click the leaf switch row, and perform the following actions:

3. In the ID field, add the appropriate ID (leaf1 is ID 101, leaf2 is ID 102 and leaf3 is ID103).

The ID must be a number that is greater than 100 because the first 100 IDs are for APIC appliance nodes.

4. In the Switch Name field, add the name of the switch, and click Update.

After an ID is assigned, it cannot be updated. The switch name can be updated by double-clicking the name and updating the Switch Name field.

![]() The Success dialog box is displayed. An IP address gets assigned to the switch, and in the Navigation pane, the switch is displayed under the pod.

The Success dialog box is displayed. An IP address gets assigned to the switch, and in the Navigation pane, the switch is displayed under the pod.

5. Monitor the Work pane until one or more spine switches appear.

6. To configure the ID, double-click the spine switch row and perform the following actions:

a. In the ID field, add the appropriate ID (spine1 is ID 201 and spine 2 is ID 202).

The ID must be a number that is greater than 100.

7. In the Switch Name field, add the name of the switch, and click Update.

The Success dialog box is displayed. An IP address gets assigned to the switch, and in the Navigation pane, the switch is displayed under the pod. Wait until all remaining switches appear in the Node Configurations table.

8. For each switch listed in the Fabric Membership table, perform the following steps:

b. Double-click the switch, enter an ID and a Name, and click Update.

c. Repeat for the next switch in the list.

Validating the Switches

1. On the menu bar, choose FABRIC > INVENTORY, and in the Navigation pane, under Pod 1, expand Fabric Membership.

2. The switches in the fabric are displayed with their node IDs. In the Work pane, all the registered switches are displayed with the IP addresses that are assigned to them.

Validating the Fabric Topology

1. On the menu bar, choose FABRIC > INVENTORY.

2. In the Navigation pane, choose the pod that you want to view.

3. In the Work pane, click the TOPOLOGY tab.

The displayed diagram shows all attached switches, APIC instances, and links.

4. (Optional) To view the port-level connectivity of a leaf switch or spine switch, double-click its icon in the topology diagram.

5. To return to the topology diagram, in the upper left corner of the Work pane click the Previous View icon.

6. (Optional) To refresh the topology diagram, in the upper left corner of the Work pane, click the Refresh icon.

![]()

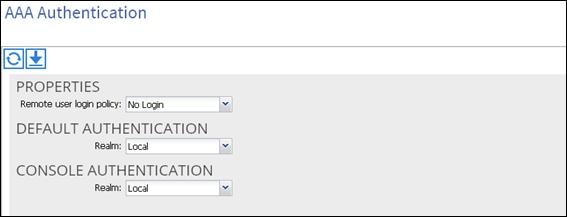

Creating User Accounts

The admin is the only user when the system starts. The APIC supports a granular, role-based access control system where user accounts can be created with various roles including non-admin users with fewer privileges.

1. On the menu bar, choose ADMIN > AAA

2. In the Navigation pane, click AAA Authentication.

3. In the Work pane, the AAA Authentication dialog box is displayed.

4. Verify that in the default Authentication field, the Realm field displays as Local.

In the Navigation pane, right-click Create Local User.

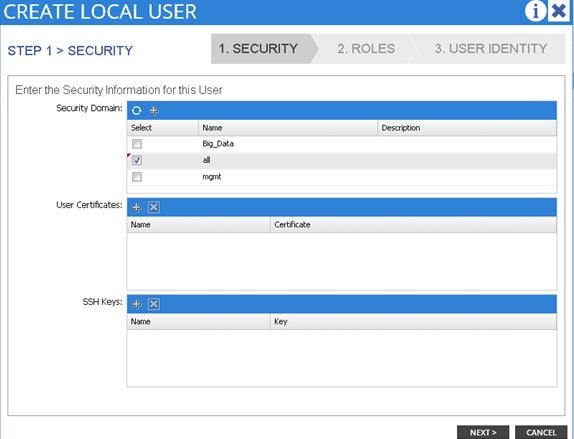

1. In the Navigation pane, expand Security Management > Local Users.

The admin user is present by default.

The Create Local User dialog box opens.

2. Under the Security dialog box, choose the desired security domain for the user, and click next.

The Roles dialog box opens.

3. In the Roles dialog box, click the radio buttons to choose the roles for your user, and click next. You can provide read-only or read/write privileges.

In the User Identity dialog box, perform the following actions:

4. In the Login ID field, add an ID.

a. In the Password field, type the password.

b. In the Confirm Password field, confirm the password.

c. Click Finish.

d. Type other parameters if desired.

5. In the Navigation pane, click the name of the user that you created. In the Work pane, expand the + sign next to the user in the Security Domains area.

The access privileges for the user are displayed.

Adding Management Access

Attach Entity Profiles (AEP)

The ACI fabric provides multiple attachment points that connect through leaf ports to various external entities such as bare metal servers, hypervisors, Layer 2 switches (for example, the Cisco UCS Fabric Interconnect), and Layer 3 routers (for example Cisco Nexus 7000 Series switches). These attachment points can be physical ports, port channels, or a virtual port channel (vPC) on the leaf switches.

An attachable entity profile (AEP) represents a group of external entities with similar infrastructure policy requirements. The infrastructure policies consist of physical interface policies, for example, Cisco Discovery Protocol (CDP), Link Layer Discovery Protocol (LLDP), maximum transmission unit (MTU), and Link Aggregation Control Protocol (LACP).

An AEP is required to deploy any VLAN pools on the leaf switches. It is possible to reuse the encapsulation pools (for example, VLAN) across different leaf switches. An AEP implicitly provides the scope of the VLAN pool (associated to the VMM domain) to the physical infrastructure.

![]() An AEP provisions the VLAN pool (and associated VLANs) on the leaf. The VLANs are not actually enabled on the port. No traffic flows unless an EPG is deployed on the port. Without VLAN pool deployment using an AEP, a VLAN is not enabled on the leaf port even if an EPG is provisioned.

An AEP provisions the VLAN pool (and associated VLANs) on the leaf. The VLANs are not actually enabled on the port. No traffic flows unless an EPG is deployed on the port. Without VLAN pool deployment using an AEP, a VLAN is not enabled on the leaf port even if an EPG is provisioned.

![]() A particular VLAN is provisioned or enabled on the leaf port based on EPG events either statically binding on a leaf port or based on VM events from external controllers such as VMware vCenter.

A particular VLAN is provisioned or enabled on the leaf port based on EPG events either statically binding on a leaf port or based on VM events from external controllers such as VMware vCenter.

![]() A leaf switch does not support overlapping VLAN pools. Different overlapping VLAN pools must not be associated with the same AEP.

A leaf switch does not support overlapping VLAN pools. Different overlapping VLAN pools must not be associated with the same AEP.

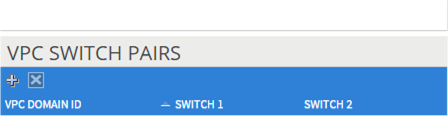

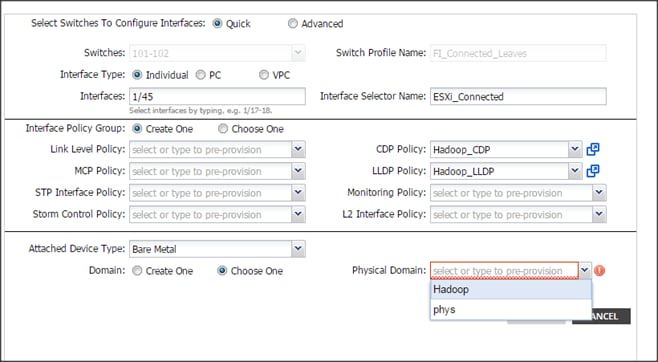

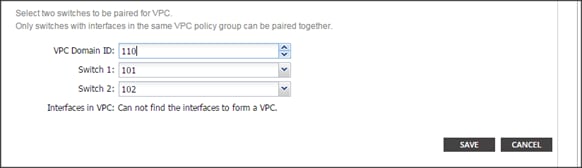

Configuring the VPC Ports for the Fabric Interconnect

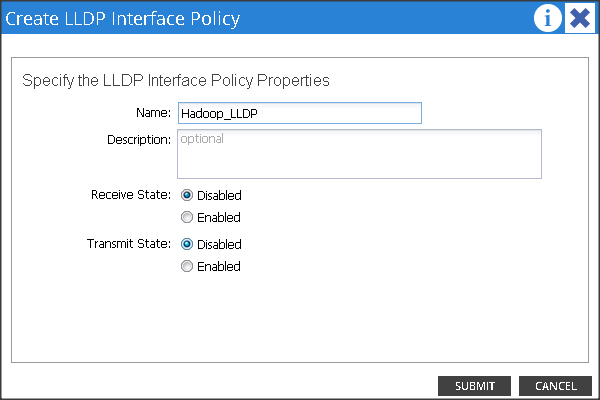

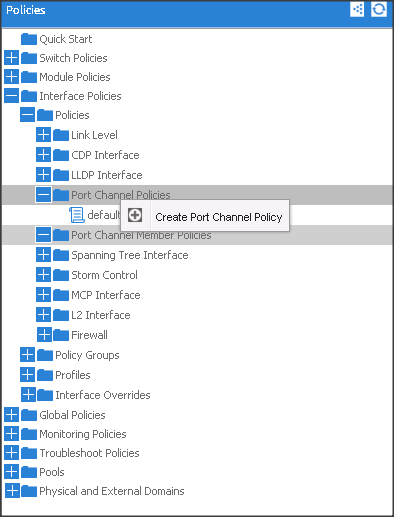

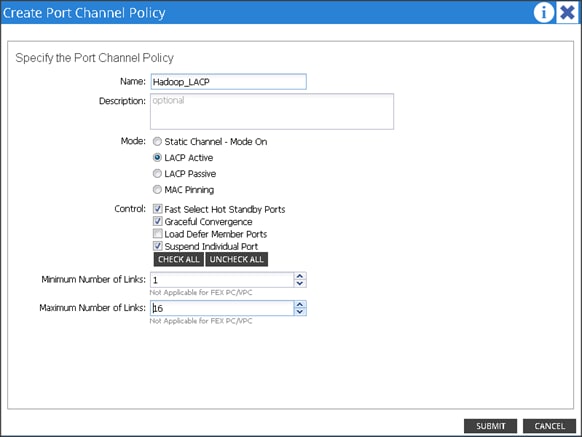

In order to configure vPC we need to create a CDP Policy, an LLDP Policy and a LACP Policy that can be applied to the vPC ports.

· The APIC does not manage fabric interconnects and the rack servers, so these services must be configured from UCSM.

· Create VLAN pools that are associated on the fabric interconnect uplink to the leaf switch on the fabric interconnect.

· Cisco UCS C-series server when used along with ACI, Link Layer Discovery Protocol (LLDP) is not supported and must be disabled.

· Cisco Discovery Protocol (CDP) is disabled by default in the Cisco UCS Manager Fabric interconnects. In the Cisco UCS Manager, you must enable CDP by creating a policy under Network Control Policies > CDP.

· The above steps are explained in further detail below.

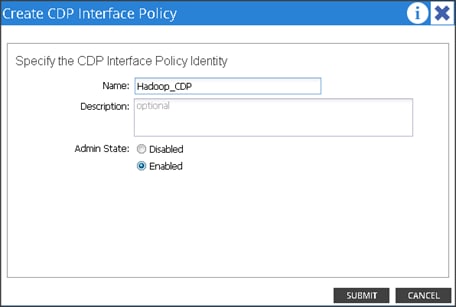

Creating CDP Policy group

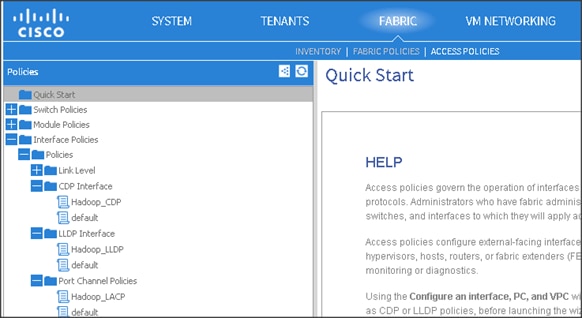

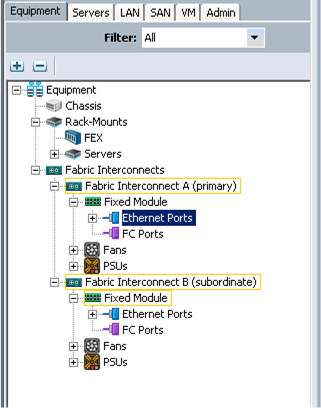

1. On the menu bar, choose FABRIC > ACCESS POLICIES.

2. In the Navigation pane, expand the Interface Policies and expand the Policies again.

3. Right Click on CDP Interface and select “Create CDP Interface Policy.”

4. In the Create CDP Interface Policy dialogue box, enter Hadoop_CDP as the policy name, set Admin State to Enabled and click Submit.

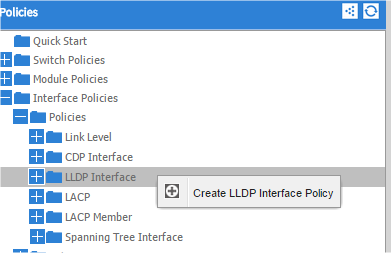

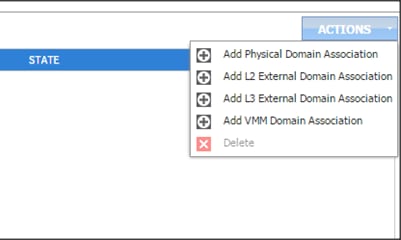

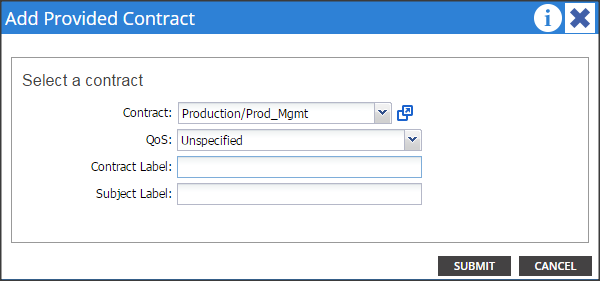

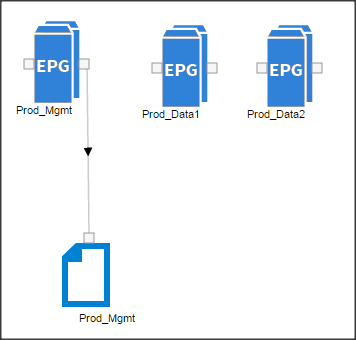

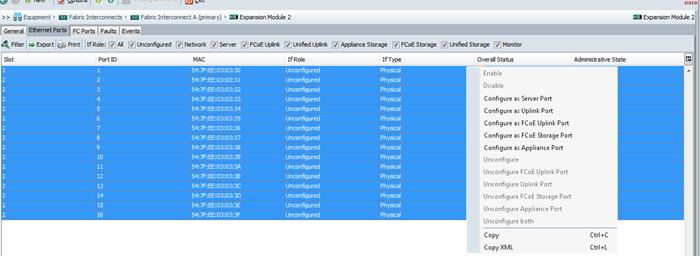

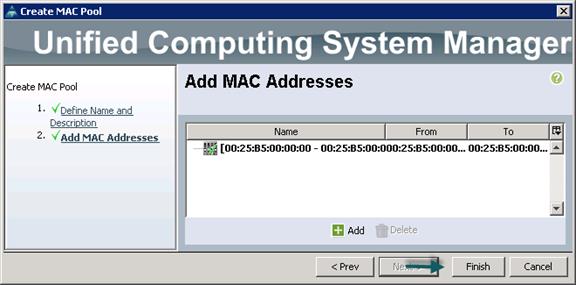

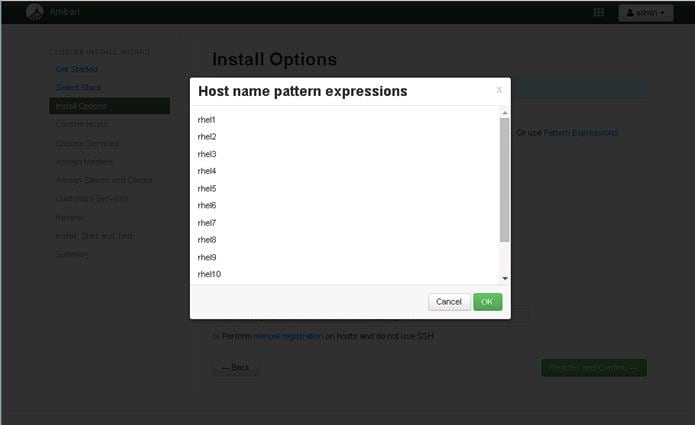

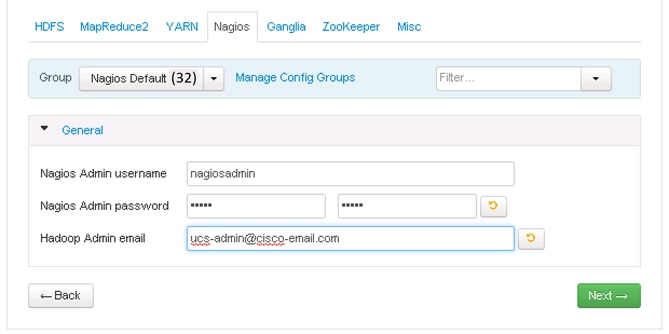

Creating LLDP Policy group