Selecting your 2-Node Network Topology

Cisco HyperFlex Edge offers both a 1 Gigabit Ethernet (GE) and a 10/25GE installation option. Both topologies support single top-of-rack (ToR) and dual ToR switch options for ultimate network flexibility and redundancy. Consider the following when determining the best topology for your cluster:

- Cisco highly recommends the 10/25GE topology for higher performance and future node expansion capabilities.

- The 1GE topology is reserved for clusters that will never require node expansion, and for instances where the ToR switch does not have 10GE ports available.

Note: A network topology is chosen during initial deployment and cannot be changed or upgraded without a full reinstallation. Choose your network topology carefully and with future needs in mind.
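The two considerations above can be read as a small decision rule. The sketch below is only an illustration of that reading; the function name and its two boolean inputs are hypothetical and are not part of any Cisco tool.

```python
def recommend_topology(needs_future_expansion: bool, tor_has_10ge_ports: bool) -> str:
    """Return "10/25GE" or "1GE" based on the two considerations above."""
    if tor_has_10ge_ports:
        # 10/25GE is the recommended choice whenever the switch can support it.
        return "10/25GE"
    if needs_future_expansion:
        # 1GE cannot be expanded later, and changing topology means reinstalling.
        raise ValueError(
            "Node expansion needs the 10/25GE topology; "
            "the ToR switch must provide 10GE-capable ports"
        )
    return "1GE"


print(recommend_topology(needs_future_expansion=False, tor_has_10ge_ports=False))
```

Raising an error for the "expansion needed but no 10GE ports" case is a design choice of this sketch: because the topology cannot be changed after deployment, that combination is better resolved before installation than papered over.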

To get started, select your network topology:

After completing the 10/25GE or 1GE ToR physical network and cabling section, continue with the Common Network Requirement Checklist.

10 or 25 Gigabit Ethernet Topology

The 10 or 25 Gigabit Ethernet (GE) switch topology provides a fully redundant design that protects against switch (if using dual or stacked switches), link and port failures. The 10/25GE switch may be one or two standalone switches or may be formed as a switch stack.

Cisco IMC Connectivity for 10/25 Gigabit Ethernet Topology

Choose one of the following Cisco IMC Connectivity options for the 2-node 10/25 Gigabit Ethernet (GE) topology:

- Use of a dedicated 1GE Cisco IMC management port is recommended. This option requires additional switch ports and cables; however, it avoids network contention and ensures always-on, out-of-band access to each physical server.

- Use of shared LOM extended mode (EXT). In this mode, single wire management is used and Cisco IMC traffic is multiplexed onto the 10/25GE VIC connections. When operating in this mode, multiple streams of traffic are shared on the same physical link and uninterrupted reachability is not guaranteed. This deployment option is not recommended.

  - In fabric interconnect-based environments, built-in QoS ensures uninterrupted access to Cisco IMC and server management when using single wire management. In HyperFlex Edge environments, QoS is not enforced and hence the use of a dedicated management port is recommended.

Assign an IPv4 management address to the Cisco IMC following the procedures in the Server Installation and Service Guide for the equivalent Cisco UCS C-series server. HyperFlex does not support IPv6 addresses.

Physical Network and Cabling for 10/25GE Topology

A managed switch (1 or 2) with VLAN capability is required. Cisco fully tests and provides reference configurations for Catalyst and Nexus switching platforms. Choosing one of these switches provides the highest level of compatibility and ensures a smooth deployment and seamless ongoing operations.

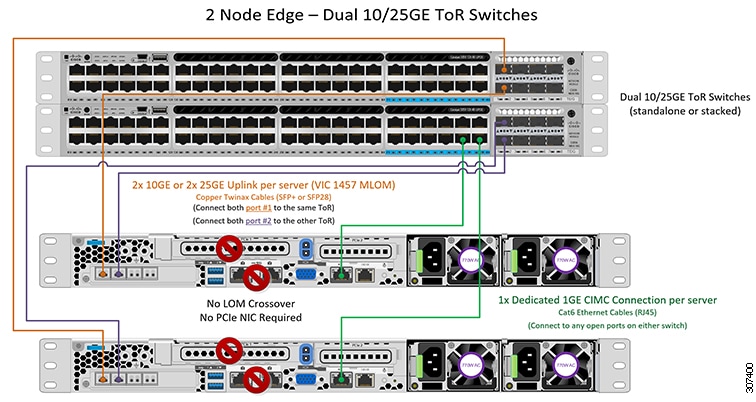

Dual switch configuration provides a slightly more complex topology with full redundancy that protects against: switch failure, link failure, and port failure. It requires two switches that may be standalone or stacked, and two 10/25GE ports, one 1GE port for CIMC management, and one Cisco VIC 1457 per server. Trunk ports are the only supported network port configuration.

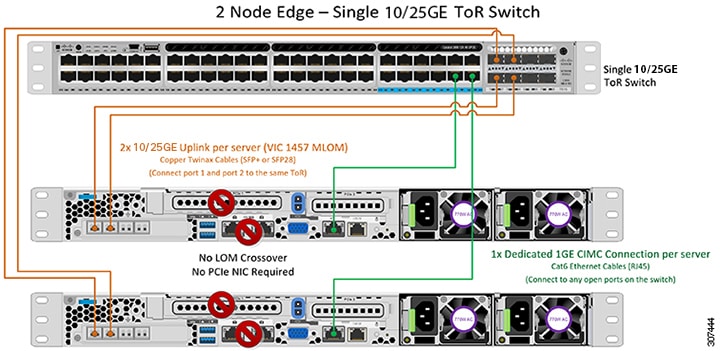

Single switch configuration provides a simple topology requiring only a single switch, and two 10/25GE ports, one 1GE port for CIMC management, and one Cisco VIC 1457 per server. Switch level redundancy is not provided, however all links/ports and associated network services are fully redundant and can tolerate failures.

Requirements for both 10 and 25GE Topologies

The following requirements are common to both 10/25GE topologies and must be met before starting deployment:

- Dedicated 1 Gigabit Ethernet (GE) Cisco IMC management port per server (recommended)

  - 2 x 1GE ToR switch ports and two (2) Category 6 Ethernet cables for dedicated Cisco IMC management port (customer supplied)

- Cisco VIC 1457 (installed in the MLOM slot in each server)

  - Prior generation Cisco VIC hardware is not supported for 2 node or 4 node HX Edge clusters.

  - 4 x 10/25GE ToR switch ports and 4 x 10/25GE SFP+ or SFP28 cables (customer supplied). Ensure the cables you select are compatible with your switch model.

  - Cisco VIC 1457 supports 10GE interface speeds in Cisco HyperFlex Release 4.0(1a) and later.

  - Cisco VIC 1457 supports 25GE interface speeds in Cisco HyperFlex Release 4.0(2a) and later.

  - Cisco VIC 1457 does not support 40GE interface speeds.
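The release requirements for VIC 1457 interface speeds can be checked mechanically. The following is a minimal sketch, assuming release strings always look like `4.0(2a)` (major, minor, maintenance number, letter); the function names are hypothetical and the parser does not cover other release formats.

```python
import re

# Minimum HyperFlex release per VIC 1457 interface speed, as listed above.
MIN_RELEASE_FOR_SPEED = {10: "4.0(1a)", 25: "4.0(2a)"}

# Assumed release format: major.minor(maintenance + letter), e.g. "4.0(2a)".
_RELEASE_RE = re.compile(r"(\d+)\.(\d+)\((\d+)([a-z])\)")


def parse_release(release: str) -> tuple:
    """Turn '4.0(2a)' into a comparable tuple (4, 0, 2, 'a')."""
    match = _RELEASE_RE.fullmatch(release.strip())
    if match is None:
        raise ValueError(f"unrecognized release string: {release!r}")
    major, minor, maintenance, letter = match.groups()
    return (int(major), int(minor), int(maintenance), letter)


def vic1457_speed_supported(speed_ge: int, release: str) -> bool:
    """True if the VIC 1457 supports the given speed (in GE) on that release."""
    minimum = MIN_RELEASE_FOR_SPEED.get(speed_ge)
    if minimum is None:
        # Speeds not listed above, such as 40GE, are not supported.
        return False
    return parse_release(release) >= parse_release(minimum)


print(vic1457_speed_supported(25, "4.0(1a)"))
print(vic1457_speed_supported(25, "4.0(2a)"))
```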

Requirements for HX Edge clusters using 25GE

Note: Using 25GE mode typically requires the use of forward error correction (FEC) depending on the transceiver or the type and length of cabling selected. The VIC 1400 series by default is configured in CL91 FEC mode (FEC mode "auto", if available in the Cisco IMC UI, is the same as CL91) and does not support auto FEC negotiation. Certain switches will need to be manually set to match this FEC mode to bring the link state up. The FEC mode must match on both the switch and VIC port for the link to come up. If the switch in use does not support CL91, you may configure the VIC ports to use CL74 to match the FEC mode available on the switch. This will require a manual FEC mode change in the CIMC UI under the VIC configuration tab. Do not start a HyperFlex Edge deployment until the link state is up as reported by the switch and the VIC ports. CL74 is also known as FC-FEC (Firecode) and CL91 is also known as RS-FEC (Reed Solomon). See the Cisco UCS C-Series Integrated Management Controller GUI Configuration Guide, Release 4.1 for further details on how to change the FEC mode configured on the VIC using the Cisco IMC GUI.
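The FEC matching rule in the note can be sketched as a simple selection: keep the VIC default (CL91) when the switch supports it, otherwise fall back to CL74. The mode labels `cl91` and `cl74` and the function name are illustrative only; they are not Cisco IMC or switch CLI keywords, and the sketch assumes FEC is needed for the chosen cabling.

```python
from typing import Iterable

# Alternate names given in the note above, mapped to the clause names.
ALIASES = {"rs-fec": "cl91", "fc-fec": "cl74", "firecode": "cl74"}


def choose_vic_fec(switch_supported_modes: Iterable[str]) -> str:
    """Pick the FEC mode to configure on the VIC ports for a given switch."""
    modes = set()
    for mode in switch_supported_modes:
        key = mode.strip().lower()
        modes.add(ALIASES.get(key, key))
    if "cl91" in modes:
        # VIC default; only the switch may need to be set manually.
        return "cl91"
    if "cl74" in modes:
        # Requires a manual FEC change on the VIC in the Cisco IMC UI.
        return "cl74"
    raise ValueError("switch supports neither CL91 nor CL74; the link will not come up")


print(choose_vic_fec(["FC-FEC"]))
```

Whatever mode is selected, the link state should still be confirmed as up on both the switch and the VIC ports before deployment starts.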

Select either a single switch or dual switch configuration to continue with physical cabling:

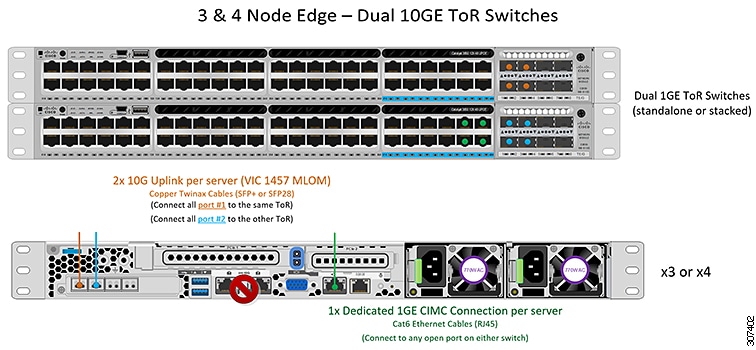

10/25 Gigabit Ethernet Dual Switch Physical Cabling

Warning: Proper cabling is important to ensure full network redundancy.

To deploy with dual ToR switches for extra redundancy (see diagram below for a visual layout):

- If using dedicated Cisco IMC, connect the 1GE management port on each server (labeled M on the back of the server) to one of the two switches.

- Connect one out of the four 10/25GE ports on the Cisco VIC from each server to the same ToR switch.

  - Use the same port number on each server to connect to the same switch.

    Note: Failure to use the same VIC port numbers will result in an extra hop for traffic between servers and will unnecessarily consume bandwidth between the two switches.

- Connect a second 10/25GE port on the Cisco VIC from each server to the other ToR switch. Use the same port number on each server to connect to the same switch.

- Do not connect additional 10/25GE ports prior to cluster installation. After cluster deployment, you may optionally use the additional two 10/25GE ports for guest VM traffic.

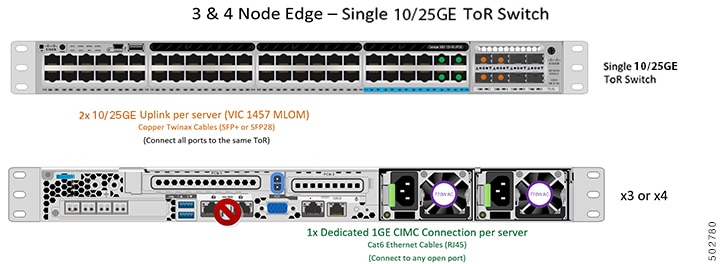
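The dual switch cabling rules lend themselves to a quick pre-deployment check. The sketch below assumes the cabling is recorded as a mapping of server name to `{VIC port number: switch name}`; the data layout, server names, and switch names are all hypothetical.

```python
from typing import Dict, List

# server name -> {VIC port number: ToR switch name}
Cabling = Dict[str, Dict[int, str]]


def check_dual_switch_cabling(cabling: Cabling) -> List[str]:
    """Return a list of problems; an empty list means the rules above are met."""
    problems = []
    for server, ports in cabling.items():
        if len(ports) != 2:
            problems.append(f"{server}: expected 2 cabled VIC ports, found {len(ports)}")
        elif len(set(ports.values())) != 2:
            problems.append(f"{server}: both VIC ports go to the same switch")
    # The same VIC port number must land on the same switch on every server.
    port_to_switch: Dict[int, str] = {}
    for server, ports in cabling.items():
        for port, switch in ports.items():
            expected = port_to_switch.setdefault(port, switch)
            if switch != expected:
                problems.append(
                    f"{server}: VIC port {port} goes to {switch}, expected {expected}"
                )
    return problems


good = {"node1": {1: "sw-a", 2: "sw-b"}, "node2": {1: "sw-a", 2: "sw-b"}}
crossed = {"node1": {1: "sw-a", 2: "sw-b"}, "node2": {1: "sw-b", 2: "sw-a"}}
print(check_dual_switch_cabling(good))
print(check_dual_switch_cabling(crossed))
```

The crossed example is the case the note warns about: every server is still redundantly cabled, but traffic between servers takes an extra hop across the inter-switch link.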

10/25 Gigabit Ethernet Single Switch Physical Cabling

Warning: Proper cabling is important to ensure full network redundancy.

To deploy with a single ToR (see diagram below for a visual layout):

- If using dedicated Cisco IMC, connect the 1GE management port on each server (labeled M on the back of the server) to the switch.

- Connect any two out of the four 10/25GE ports on the Cisco VIC from each server to the same ToR switch.

- Do not connect additional 10/25GE ports prior to cluster installation. After cluster deployment, you may optionally use the additional two 10/25GE ports for guest VM traffic.

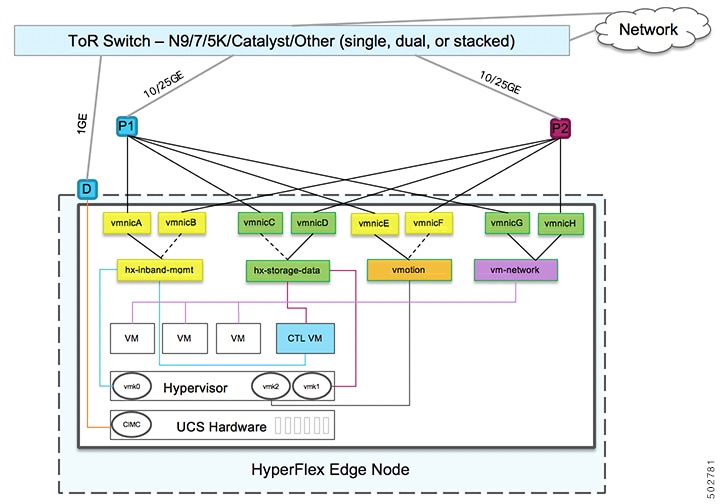

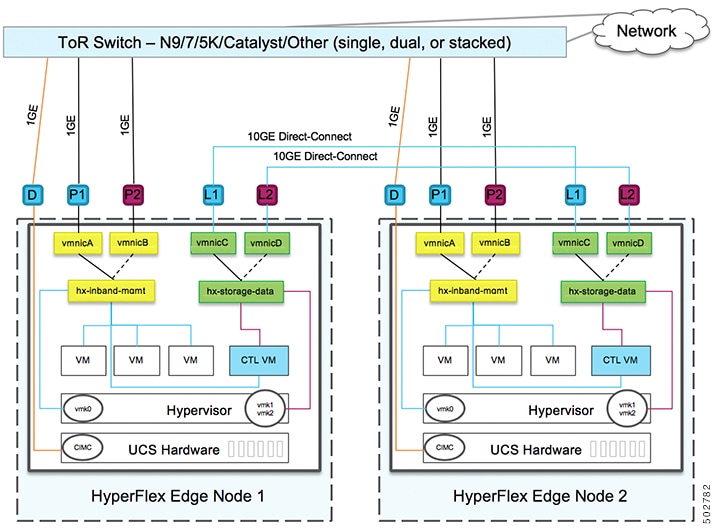

Virtual Networking Design for 2-Node 10/25 Gigabit Ethernet Topology

This section details the virtual network setup. No action is required as all of the virtual networking is set up automatically by the HyperFlex deployment process. These extra details are included below for informational and troubleshooting purposes.

Virtual Switches:

Four vSwitches are required:

- vswitch-hx-inband-mgmt: ESXi management (vmk0), storage controller management network

- vswitch-hx-storage-data: ESXi storage interface (vmk1), HX storage controller data network

- vmotion: vMotion interface (vmk2)

- vswitch-hx-vm-network: VM guest portgroups

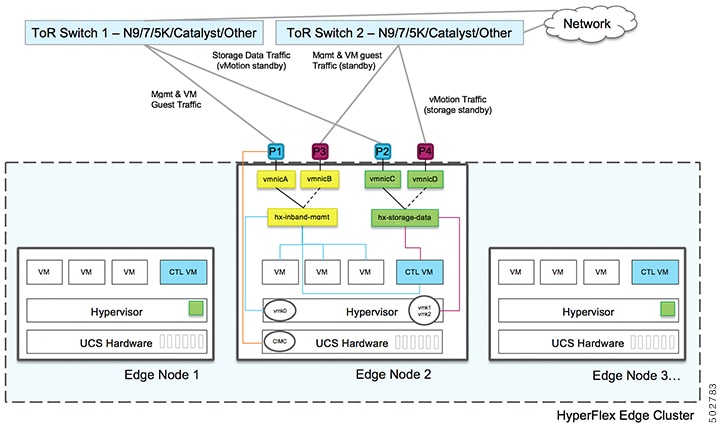

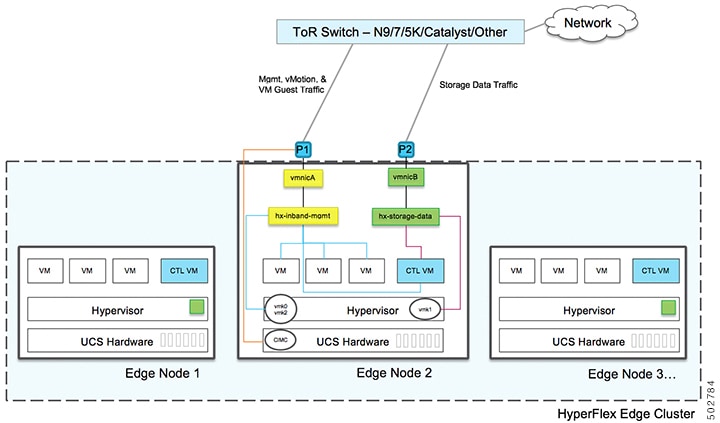

Network Topology

Failover Order:

- vswitch-hx-inband-mgmt: the entire vSwitch is set for active/standby. All services by default consume a single uplink port and fail over when needed.

- vswitch-hx-storage-data: the HyperFlex storage data network and vmk1 are set with the opposite failover order to the inband-mgmt and vmotion vSwitches, so that traffic is load balanced across both uplinks.

- vmotion: the vMotion VMkernel port (vmk2) is configured when using the post_install script. Failover order is set for active/standby.

- vswitch-hx-vm-network: the vSwitch is set for active/active. Individual portgroups can be overridden as needed.
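The failover order described above can be written down as a small table to make the load balancing visible. This is a sketch only: `side_a` and `side_b` stand for the two cabled physical VIC ports (the real vmnic names differ per vSwitch), and which side is active for management is an assumption.

```python
def failover_plan(side_a: str, side_b: str) -> dict:
    """Map each vSwitch to its active and standby uplink sides."""
    return {
        # Active/standby on the first side.
        "vswitch-hx-inband-mgmt": {"active": [side_a], "standby": [side_b]},
        # Opposite order, so storage traffic normally uses the other link.
        "vswitch-hx-storage-data": {"active": [side_b], "standby": [side_a]},
        # Same order as management.
        "vmotion": {"active": [side_a], "standby": [side_b]},
        # Active/active; individual portgroups may override this.
        "vswitch-hx-vm-network": {"active": [side_a, side_b], "standby": []},
    }


plan = failover_plan("port-1", "port-2")
for vswitch, order in plan.items():
    print(vswitch, order)
```

With both links healthy, storage data traffic and management traffic sit on different physical links, which is what the opposite failover order is meant to achieve.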

10/25 Gigabit Ethernet Switch Configuration Guidelines

A minimum of three VLANs is required.

- 1 VLAN for the following connections: VMware ESXi management, Storage Controller VM management and Cisco IMC management.

  - VMware ESXi management and Storage Controller VM management must be on the same subnet and VLAN.

  - A dedicated Cisco IMC management port may share the same VLAN with the management interfaces above or may optionally use a dedicated subnet and VLAN. If using a separate VLAN, it must have L3 connectivity to the management VLAN above and must meet Intersight connectivity requirements.

  - If using shared LOM extended mode for Cisco IMC management, a dedicated VLAN is recommended.

- 1 VLAN for Cisco HyperFlex storage traffic. This can and should be an isolated and non-routed VLAN. It must be unique and cannot overlap with the management VLAN.

- 1 VLAN for vMotion traffic. This can be an isolated and non-routed VLAN.

  Note: It is not possible to collapse or eliminate the need for these VLANs. The installation will fail if attempted.

- Additional VLANs as needed for guest VM traffic. These VLANs will be configured as additional portgroups in ESXi and should be trunked and allowed on all server facing ports on the ToR switch.

  - These additional guest VM VLANs are optional. You may use the same management VLAN above for guest VM traffic in environments that wish to keep a simplified flat network design.

    Note: Due to the nature of the Cisco VIC carving up multiple vNICs from the same physical port, it is not possible for guest VM traffic configured on vswitch-hx-vm-network to communicate L2 to interfaces or services running on the same host. It is recommended to either a) use a separate VLAN and perform L3 routing or b) ensure any guest VMs that need access to management interfaces be placed on the vswitch-hx-inband-mgmt vSwitch. In general, guest VMs should not be put on any of the HyperFlex configured vSwitches except for the vm-network vSwitch. An example use case would be if you need to run vCenter on one of the nodes and it requires connectivity to manage the ESXi host it is running on. In this case, use one of the recommendations above to ensure uninterrupted connectivity.

- Switchports connected to the Cisco VIC should be configured in trunk mode with the appropriate VLANs allowed to pass.

- Switchports connected to the dedicated Cisco IMC management port should be configured in 'Access Mode' on the appropriate VLAN.

- All cluster traffic will traverse the ToR switches in the 10/25GE topology.

- Spanning tree portfast trunk (trunk ports) should be enabled for all network ports.

  Note: Failure to configure portfast may cause intermittent disconnects during ESXi bootup and longer than necessary network re-convergence during physical link failure.
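The VLAN and switchport guidelines above can be sanity-checked before touching the switch. The sketch below validates a VLAN plan and renders Catalyst IOS-style lines for one server-facing trunk port. The function names, VLAN IDs, and interface name are hypothetical, the 1-4094 range is the general 802.1Q limit rather than a HyperFlex rule, and exact command syntax varies by platform and software release, so treat the rendered lines as a starting point and compare them with Cisco's reference configurations.

```python
from typing import Iterable, List


def validate_vlan_plan(mgmt: int, storage: int, vmotion: int,
                       guest: Iterable[int] = ()) -> List[str]:
    """Return a list of problems with the VLAN plan; empty means it looks valid."""
    errors = []
    required = {"management": mgmt, "storage": storage, "vMotion": vmotion}
    named = list(required.items()) + [("guest", vlan) for vlan in guest]
    for name, vlan in named:
        if not 1 <= vlan <= 4094:
            errors.append(f"{name} VLAN {vlan} is outside 1-4094")
    # The three required VLANs cannot be collapsed into fewer.
    if len(set(required.values())) != 3:
        errors.append("management, storage and vMotion need three distinct VLANs")
    return errors


def trunk_port_lines(interface: str, mgmt: int, storage: int, vmotion: int,
                     guest: Iterable[int] = ()) -> List[str]:
    """Render IOS-style lines for a switchport facing a Cisco VIC port."""
    allowed = sorted({mgmt, storage, vmotion, *guest})
    return [
        f"interface {interface}",
        " switchport mode trunk",
        " switchport trunk allowed vlan " + ",".join(str(v) for v in allowed),
        " spanning-tree portfast trunk",
    ]


print(validate_vlan_plan(101, 102, 103, guest=[101, 110]))
print("\n".join(trunk_port_lines("TenGigabitEthernet1/0/1", 101, 102, 103, [110])))
```

Guest VLANs are allowed to repeat the management VLAN here because the guidelines permit a flat design; only the three required VLANs are forced to be distinct.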

Additional Considerations:

- Additional third-party NICs may be installed in the HX Edge nodes as needed. See the section in chapter 1 with the link to the networking guide.

- All non-VIC interfaces must be shut down or left uncabled until the install is completed.

- Only a single VIC is supported per HX Edge node in the MLOM slot. PCIe based VIC adapters are not supported with HX Edge nodes.

Jumbo Frames for 10/25 Gigabit Ethernet

Jumbo frames are typically used to reduce the number of packets transmitted on your network and increase efficiency. The following describes the guidelines to using jumbo frames on your 10/25GE topology.

- The option to enable jumbo frames is only provided during initial install and cannot be changed later.

- Jumbo frames are not required. If opting out of jumbo frames, leave the MTU set to 1500 bytes on all network switches.

- For highest performance, jumbo frames may be optionally enabled. Ensure full path MTU is 9000 bytes or greater. Keep the following considerations in mind when enabling jumbo frames:

  - When running a dual switch setup, it is imperative that all switch interconnects and switch uplinks have jumbo frames enabled. Failure to ensure full path MTU could result in a cluster outage if traffic is not allowed to pass after link or switch failure.

  - The HyperFlex installer will perform a one-time test on initial deployment that will force the failover order to use the standby link on one of the nodes. If the switches are cabled correctly, this will test the end to end path MTU. Do not bypass this warning if a failure is detected. Correct the issue and retry the installer to ensure the validation check passes.

  - For these reasons and to reduce complexity, it is recommended to disable jumbo frames when using a dual switch setup.

- The option to enable jumbo frames is found in the HyperFlex Cluster profile, under the Network Configuration policy. Checking the box will enable jumbo frames. Leaving the box unchecked will keep jumbo frames disabled.
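The full path MTU requirement comes down to the smallest MTU on any hop, including switch interconnects and uplinks. The sketch below illustrates that check and the related arithmetic for a manual test: an IPv4 ICMP echo that fills a 9000-byte MTU carries 9000 minus 20 bytes of IPv4 header minus 8 bytes of ICMP header. The function names and sample hop values are hypothetical; this is not how the HyperFlex installer performs its own validation.

```python
from typing import Iterable

JUMBO_MTU = 9000      # bytes, minimum full path MTU for jumbo frames
IPV4_HEADER = 20      # bytes, IPv4 header without options
ICMP_HEADER = 8       # bytes, ICMP echo header


def path_supports_jumbo(hop_mtus: Iterable[int]) -> bool:
    """True only if every hop on the path has an MTU of at least 9000 bytes."""
    mtus = list(hop_mtus)
    return bool(mtus) and min(mtus) >= JUMBO_MTU


def max_ping_payload(mtu: int = JUMBO_MTU) -> int:
    """Largest ICMP echo payload that fits in one unfragmented IPv4 packet."""
    return mtu - IPV4_HEADER - ICMP_HEADER


# Server port, ToR switch port, inter-switch link, second ToR switch port.
print(path_supports_jumbo([9000, 9216, 9216, 9216]))
print(path_supports_jumbo([9000, 9216, 1500, 9216]))
print(max_ping_payload())
```

The second example shows why dual switch setups are riskier: a single interconnect left at 1500 bytes is only exercised after a failover, which is the situation the installer's one-time test is designed to expose.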

Next Steps:

Complete the Common Network Requirement Checklist.

1 Gigabit Ethernet Topology

The 1 Gigabit Ethernet (GE) switch topology provides a fully redundant design that protects against switch (if using dual or stacked switches), link and port failures. The 1GE switch may be one or two standalone switches or may be formed as a switch stack.

Note: Single or dual 1Gb switch connectivity limits the maximum performance that virtual machines can get and is not recommended for applications requiring high performance.

Cisco IMC Connectivity for 1 Gigabit Ethernet Topology

Cisco IMC Connectivity for your 2-node 1 Gigabit Ethernet (GE) topology requires the use of the dedicated 1GE Cisco IMC management port. Other operating modes, including shared LOM mode, are not available due to the use of direct connect cables in this topology.

Assign an IPv4 management address to the Cisco IMC following the procedures in the Server Installation and Service Guide for the equivalent Cisco UCS C-series server. HyperFlex does not support IPv6 addresses.

Physical Network and Cabling for 1 GE Topology

A managed switch (1 or 2) with VLAN capability is required. Cisco fully tests and provides reference configurations for Catalyst and Nexus switching platforms. Choosing one of these switches provides the highest level of compatibility and ensures a smooth deployment and seamless ongoing operations.

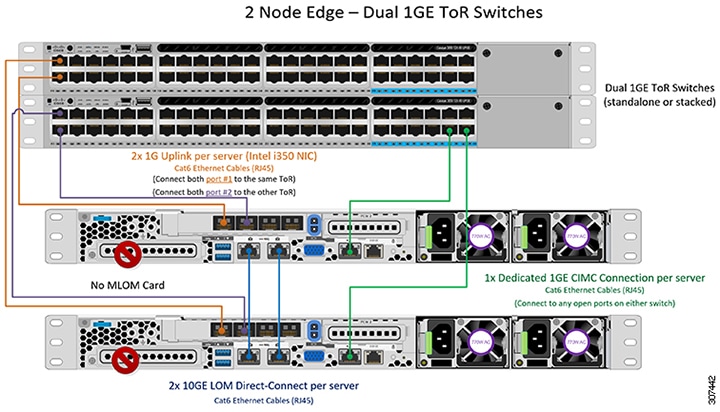

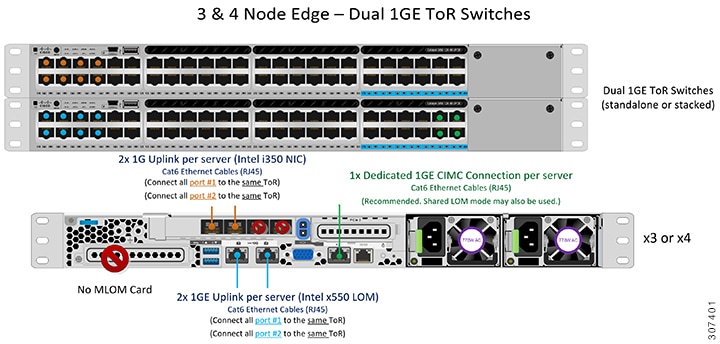

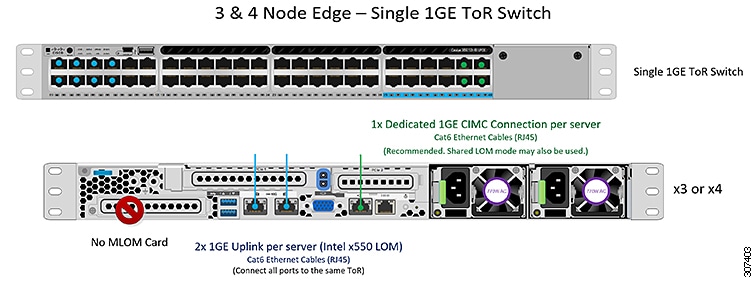

Dual switch cabling provides a slightly more complex topology with full redundancy that protects against: switch failure, link failure, and switch port failure. It requires two switches that may be standalone or stacked, and three 1 Gigabit Ethernet (GE) switch ports per server. Single switch cabling provides a simple topology requiring only single switch and three 1GE switch ports per server. Switch level redundancy is not provided, however all links/ports and associated network services are fully redundant and can tolerate failures.

The 1GE topology uses direct-connect cables for high speed, redundant, 10GE connectivity between the two nodes without the need for a 10GE capable switch.

Note: This topology does not support future node expansion capability and should be avoided where requirements may dictate adding more HX Edge nodes in the future.

The following requirements are common to both 1GE topologies and must be met before starting deployment:

- Dedicated 1 Gigabit Ethernet (GE) Cisco IMC management port per server (required)

- Intel i350 Quad Port PCIe NIC Card (installed in a PCIe slot in each server) (required)

  - Cisco VIC is not used in this topology

- 2 x 10GE DirectConnect LAN-on-Motherboard (LOM) connections (do not consume switchports)

  - 2 x Category 6 straight through Ethernet cables for direct connect links (customer supplied)

- 6 x 1GE Top of Rack (ToR) switchports and 6 x Category 6 Ethernet cables (customer supplied)
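The port and cable counts above follow from simple per-server arithmetic. The sketch below reproduces them for a 2-node cluster; the function name and dictionary keys are hypothetical.

```python
NODES = 2  # this checklist covers 2-node clusters


def port_budget_1ge() -> dict:
    """Count ToR switchports and cables needed for the 1GE topology."""
    i350_uplinks_per_node = 2   # two of the four i350 ports are cabled
    cimc_ports_per_node = 1     # dedicated Cisco IMC management port
    switchports = NODES * (i350_uplinks_per_node + cimc_ports_per_node)
    direct_connect_cables = 2   # LOM port 1 to LOM port 1, LOM port 2 to LOM port 2
    return {
        "tor_switchports_1ge": switchports,
        "switch_cables": switchports,
        "direct_connect_cables": direct_connect_cables,
    }


print(port_budget_1ge())
```

The result matches the three 1GE switch ports per server stated earlier: two i350 uplinks plus one Cisco IMC port, for six switchports in total, with the two direct connect cables consuming no switchports.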

Select either a single switch or dual switch configuration to continue with physical cabling:

1 Gigabit Ethernet Dual Switch Cabling

Warning: Proper cabling is important to ensure full network redundancy.

To deploy with dual ToR switches for extra redundancy (see diagram below for a visual layout):

- Connect the 1GE dedicated Cisco IMC management port on each server (labeled M on the back of the server) to one of the two switches.

- Connect the LAN-on-motherboard (LOM) port 1 on one server to the LOM port 1 on the other server using a regular Ethernet cable.

- Connect LOM port 2 on one server to LOM port 2 on the second server.

- Connect one out of the four 1GE ports on the i350 NIC from each server to the same ToR switch. Use the same port number on each server to connect to the same switch.

  Note: Failure to use the same port numbers will result in an extra hop for traffic between servers and will unnecessarily consume bandwidth between the two switches.

- Connect a second 1GE port on the i350 NIC from each server to the other ToR switch. Use the same port number on each server to connect to the same switch.

- Do not connect additional 1GE ports prior to cluster installation. After cluster deployment, you may optionally use the additional two 1GE ports for guest VM traffic.

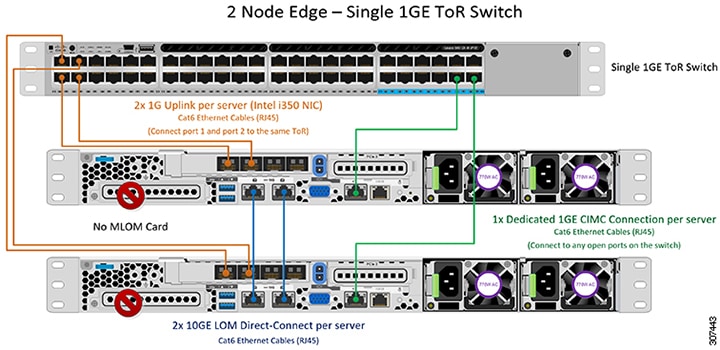
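The dual switch cabling steps can be expanded into an explicit cable list, which is handy as a checklist during installation. This is a sketch under assumptions: the node and switch names are placeholders, i350 ports 1 and 2 are chosen arbitrarily out of the four, and splitting the two Cisco IMC ports across the two switches is one possible choice, since the steps only require each to reach one of the two switches.

```python
from typing import List, Tuple

Cable = Tuple[str, str]  # (one end, other end)


def cable_plan_1ge_dual(nodes=("node1", "node2"),
                        switches=("sw-a", "sw-b")) -> List[Cable]:
    """List every cable for a 2-node, dual switch 1GE deployment."""
    node1, node2 = nodes
    sw_a, sw_b = switches
    plan: List[Cable] = [
        # Dedicated Cisco IMC management ports (labeled M); split is an assumption.
        (f"{node1}:M", sw_a),
        (f"{node2}:M", sw_b),
        # Direct connect links between the servers; no switchports consumed.
        (f"{node1}:LOM1", f"{node2}:LOM1"),
        (f"{node1}:LOM2", f"{node2}:LOM2"),
    ]
    for node in nodes:
        # Same i350 port number on each server goes to the same switch.
        plan.append((f"{node}:i350-1", sw_a))
        plan.append((f"{node}:i350-2", sw_b))
    return plan


for end_a, end_b in cable_plan_1ge_dual():
    print(f"{end_a} <-> {end_b}")
```

The plan contains eight cables, six of which terminate on a switch, which agrees with the six ToR switchports and two direct connect cables listed in the requirements.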

1 Gigabit Ethernet Single Switch Cabling

Warning: Proper cabling is important to ensure full network redundancy.

To deploy with a single ToR (see diagram below for a visual layout):

- Connect the 1GE dedicated Cisco IMC management port on each server (labeled M on the back of the server) to the switch.

- Connect the LAN-on-motherboard (LOM) port 1 on one server to the LOM port 1 on the other server using a regular Ethernet cable.

- Connect LOM port 2 on one server to LOM port 2 on the second server.

- Connect any two out of the four 1GE ports on the i350 NIC from each server to the same ToR switch.

- Do not connect additional 1GE ports prior to cluster installation. After cluster deployment, you may optionally use the additional two 1GE ports for guest VM traffic.

Virtual Networking Design for 2-Node 1 Gigabit Ethernet Topology

Virtual Switches:

This section details the virtual network setup. No action is required as all of the virtual networking is set up automatically by the HyperFlex deployment process. These extra details are included below for informational and troubleshooting purposes.

The recommended configuration for each ESXi calls for the following networks to be separated:

- Management traffic network

- Data traffic network

- vMotion network

- VM network

The minimum network configuration requires at least two separate networks:

- Management network (includes vMotion and VM network)

- Data network (for storage traffic)

Two vSwitches each carrying different networks are required:

- vswitch-hx-inband-mgmt: ESXi management (vmk0), HyperFlex storage controller management network, VM guest portgroups.

- vswitch-hx-storage-data: ESXi storage interface (vmk1), vMotion interface (vmk2), HyperFlex storage controller data network.

Network Topology

Failover Order:

vswitch-hx-inband-mgmt: the entire vSwitch is set for active/standby across the two uplinks. All services by default consume a single uplink port and fail over when needed. Failover order for guest VM portgroups may be overridden as needed to achieve better load balancing.

vswitch-hx-storage-data: the HyperFlex storage data network and vmk1 are set to the same active/standby order. The vMotion VMkernel port is set to use the opposite order when configured using the post_install script. This ensures full utilization of the direct connect links.

1 Gigabit Ethernet Switch Configuration Guidelines

- 1 VLAN minimum for the following connections: VMware ESXi management, Storage Controller VM management and Cisco IMC management.

  - VMware ESXi management and Storage Controller VM management must be on the same subnet and VLAN.

  - The dedicated Cisco IMC management port may share the same VLAN with the management interfaces above or may optionally use a dedicated subnet and VLAN. If using a separate VLAN, it must have L3 connectivity to the management VLAN above and must meet Intersight connectivity requirements.

- Additional VLANs as needed for guest VM traffic. These VLANs will be configured as additional portgroups in ESXi and should be trunked on all connections to the ToR switch.

  - These additional guest VM VLANs are optional. You may use the same management VLAN above for guest VM traffic in environments that wish to keep a simplified flat network design.

- Switchports connected to the Intel i350 should be configured in trunk mode with the appropriate VLANs allowed to pass.

- Switchports connected to the dedicated Cisco IMC management port should be configured in 'Access Mode' on the appropriate VLAN.

- VMware vMotion traffic and Cisco HyperFlex data traffic will traverse the direct connect LOMs and will therefore not utilize the top of rack switch. Hence additional VLANs are not required for these services.

  - Configuration of jumbo frames on the ToR switch is not required in this topology because all traffic remains local and does not need to traverse upstream switches. This topology therefore defaults vMotion traffic to use jumbo frames for high performance.

- Spanning tree portfast trunk (trunk ports) should be enabled for all network ports.

  Note: Failure to configure portfast may cause intermittent disconnects during ESXi bootup and longer than necessary network re-convergence during physical link failure.

Jumbo Frames for 1 Gigabit Ethernet

Jumbo frames are typically used to reduce the number of packets transferred on your network. The following describes the guidelines to using jumbo frames on your 1GE topology.

- Jumbo frames are automatically configured on the vMotion network as there is no additional setup required.

- The option to enable jumbo frames is found in the HyperFlex Cluster profile, under the Network Configuration policy. When using the 1GE topology, you may choose to enable jumbo frames by ensuring the check box is enabled before starting deployment.

Next Steps:

Complete the Common Network Requirement Checklist.
