Introduction

This document describes how to handle bearers efficiently for emergency calls, such as E911 calls, using LWR and UDC VMs to ensure prioritized call setup, reliability, and optimized use of network resources.

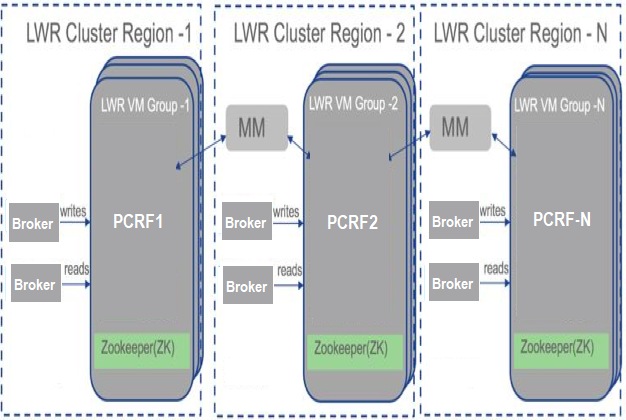

Architecture Overview

E911 emergency calls require prioritized bearer management to guarantee call quality and network availability. The solution uses Lightweight Replication (LWR) and User Data Cache (UDC) VMs. LWR uses Kafka internally for replication; Kafka provides high-speed replication across multiple PCRF clusters, which enables coordinated bearer control during emergency calls.

This feature lets PCRF nodes share subscriber information, such as the number of attached APNs, the notification status, and intermediate states (for example, update and bearer release).

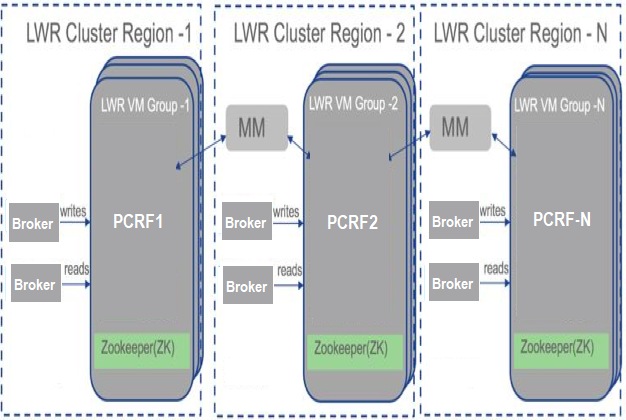

LWR Cluster Components

- Zookeeper: Elects the cluster controller, ensures that only one controller exists, and elects a new one if the controller fails. It also manages cluster membership (which brokers are alive and part of the cluster) and topic configuration (which topics exist, how many partitions each has, where the replicas are, which replica is the preferred leader, and which configuration overrides are set for each topic).

- Broker: LWR uses broker services, which are message queues running on the host. A Kafka broker receives messages from producers and stores them on disk, keyed by a unique offset. Consumers fetch messages by topic, partition, and offset. Brokers form a Kafka cluster by sharing information with each other, either directly or indirectly through Zookeeper.

- MirrorMaker: Kafka MirrorMaker mirrors data between Kafka clusters, which creates replicas of data from one datacenter in another. Multiple mirroring processes can run simultaneously to increase throughput and fault tolerance.
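The broker and MirrorMaker roles above can be illustrated with a toy in-memory model. This is a sketch only: `ToyBroker` and `mirror` are hypothetical names, not Kafka's client API, and the model leaves out partition leadership, on-disk storage, in-cluster replication, and Zookeeper coordination. It shows the two ideas the list relies on: each partition is an append-only log addressed by offset, and mirroring is a consumer on one cluster that re-produces messages into another.

```python
from __future__ import annotations

from collections import defaultdict


class ToyBroker:
    """In-memory stand-in for a Kafka broker: one append-only log per (topic, partition)."""

    def __init__(self) -> None:
        self._logs: defaultdict[tuple[str, int], list[bytes]] = defaultdict(list)

    def produce(self, topic: str, partition: int, value: bytes) -> int:
        """Append a message from a producer and return the offset it was stored at."""
        log = self._logs[(topic, partition)]
        log.append(value)
        return len(log) - 1

    def fetch(self, topic: str, partition: int, offset: int) -> list[bytes]:
        """Return every message at or after `offset`, the way a consumer reads a partition."""
        return self._logs[(topic, partition)][offset:]


def mirror(source: ToyBroker, target: ToyBroker, topic: str, partition: int, from_offset: int) -> int:
    """Copy new messages of one partition from source to target, MirrorMaker-style.

    Returns the source offset to resume mirroring from on the next call.
    """
    messages = source.fetch(topic, partition, from_offset)
    for value in messages:
        target.produce(topic, partition, value)
    return from_offset + len(messages)
```

Returning the next offset from `mirror` mimics how a mirroring process tracks its consumer position, so repeated calls copy only the messages that are new since the last run.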

PCRF - LWR Region and Topic Configuration

In production, PCRFs can be grouped into multiple regions, such as west, south-east, and north-east. Each region can contain around five to six PCRF nodes that are interconnected through LWR. PCRF writes or updates data on LWR whenever certain events occur for a subscriber, for example:

- Service/bearer creation

- Detach from network

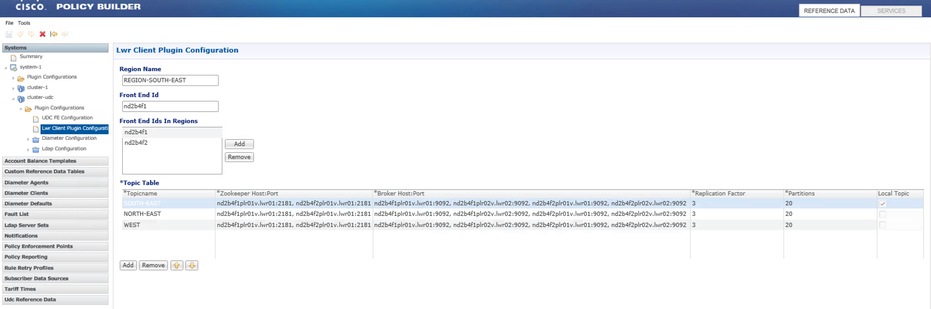

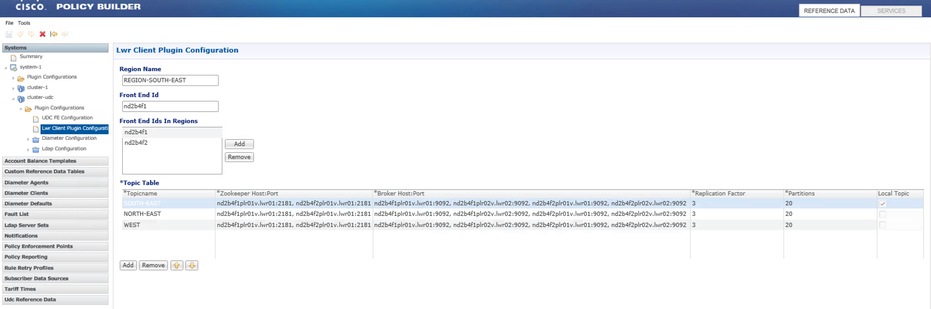

Plug-in Configuration

Under 'cluster-udc', an 'lwr client plugin' configuration has been added. It includes the following parameters:

- Region Name: Name of the region to which this PCRF belongs.

- Front End Id: Front End Id of this PCRF. This value must match the Front End Id values already used in the UDC configuration.

- Front End Id in Regions: Front End Ids of all the PCRFs in this region.

- Topic Table: List of topic names mapped to their zookeepers and brokers, indicating which topic is local. The table must have the topics of all three regions configured: the local topic must be set to true, and the remaining two topics must be set to false.
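The Topic Table rules above lend themselves to a validation check. The following is a minimal sketch that assumes the plug-in settings have been loaded into Python objects; the class and field names are hypothetical and do not reflect the actual configuration schema. It checks that this PCRF's Front End Id appears among the region's Front End Ids, that all three region topics are present, and that exactly one topic is marked local.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class TopicEntry:
    """One Topic Table row: a region topic, its zookeepers and brokers, and whether it is local."""
    topic: str
    zookeepers: list[str]
    brokers: list[str]
    local: bool


@dataclass
class LwrClientPluginConfig:
    """Hypothetical in-memory form of the 'lwr client plugin' settings under 'cluster-udc'."""
    region_name: str
    front_end_id: str
    front_end_ids_in_region: list[str]
    topic_table: list[TopicEntry]

    def validate(self, expected_regions: int = 3) -> list[str]:
        """Return a list of rule violations; an empty list means the configuration passes."""
        errors: list[str] = []
        if self.front_end_id not in self.front_end_ids_in_region:
            errors.append("Front End Id is not listed in Front End Id in Regions")
        if len(self.topic_table) != expected_regions:
            errors.append(
                f"Topic Table must list {expected_regions} region topics, found {len(self.topic_table)}"
            )
        local_count = sum(1 for entry in self.topic_table if entry.local)
        if local_count != 1:
            errors.append(f"exactly one topic must be local, found {local_count}")
        return errors
```

Returning every violation instead of raising on the first one lets an operator see all misconfigurations in a single pass.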

Plugin Configuration: LWR Client Plugin Configuration

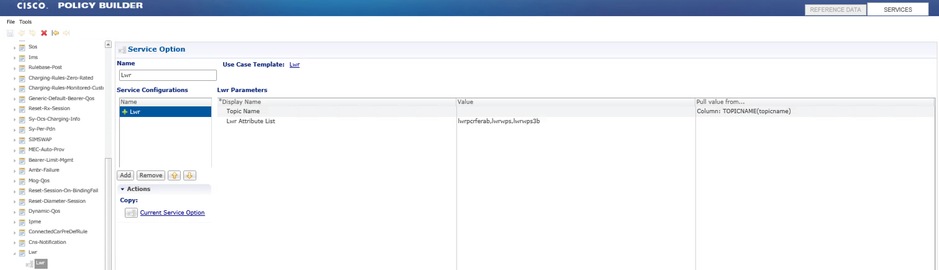

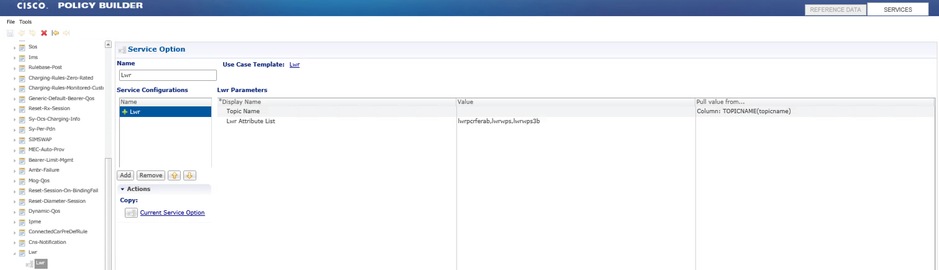

LWR Service Option

A new service option has been added to support writes to LWR; this service configuration must be used in the UDC service.

The LWR service option uses the topic name to choose the topic that data is written to, and a list of attributes to write to LWR. The topic name must be selected from the Topic-Lookup CRD table based on the Front End Id.
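As a rough illustration of what the LWR service option does with its two inputs, the sketch below builds a single write from a topic name and a configured attribute list. It assumes the topic has already been resolved from the Topic-Lookup table, and it uses JSON as the payload encoding purely for illustration; `build_lwr_write` is a hypothetical helper, and the real LWR message format is not described in this document.

```python
from __future__ import annotations

import json


def build_lwr_write(
    topic: str, subscriber_attrs: dict[str, str], attributes_to_write: list[str]
) -> tuple[str, bytes]:
    """Build one LWR write: the target topic plus a payload holding only the configured attributes.

    `topic` is assumed to have been resolved already from the Topic-Lookup table by Front End Id.
    Attributes the subscriber does not have are skipped rather than written as empty values.
    """
    selected = {name: subscriber_attrs[name] for name in attributes_to_write if name in subscriber_attrs}
    # JSON is an illustrative assumption; the actual LWR wire format is not specified here.
    return topic, json.dumps(selected, sort_keys=True).encode("utf-8")
```

Filtering by the configured attribute list keeps unrelated subscriber data off the replicated topic.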

Service Option: LWR

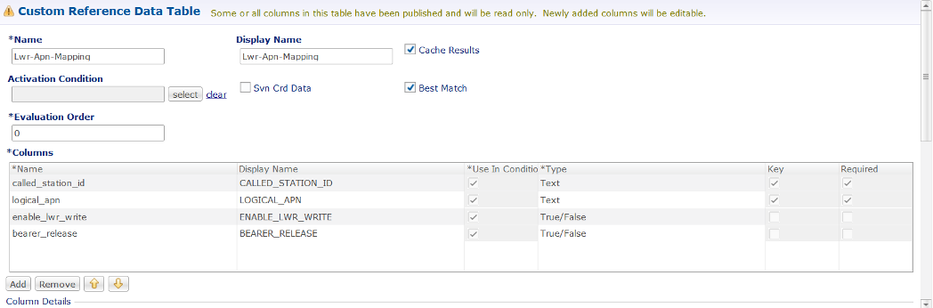

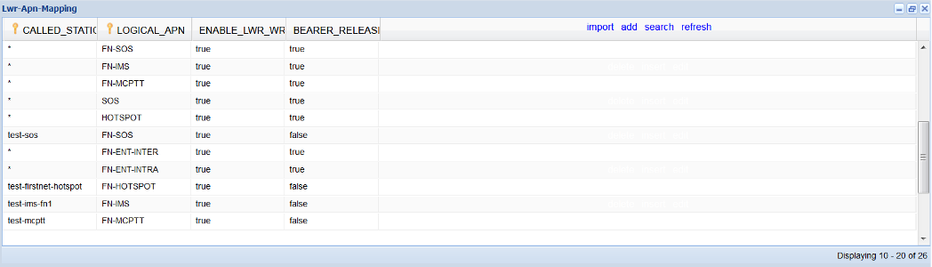

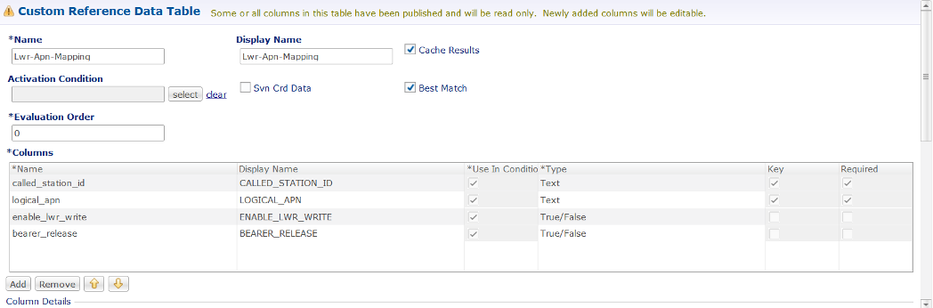

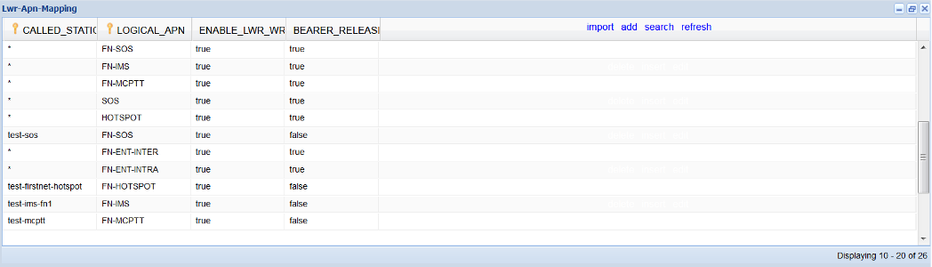

CRD Changes - Lwr-Apn-Mapping

This table controls, per APN, whether the 'lwrpcrferab' attribute is written to LWR and whether the bearer can be released for E911 bearer management.

Search Table Group > CRD: Lwr-Apn-Mapping

UDC writes the 'lwrpcrferab' attribute to LWR only if 'enable_lwr_write' is true. This lets the operations team enable LWR writes per APN. For example, LWR write was initially enabled only for some test APNs and disabled for all other APNs, which allowed the operations team to verify that the LWR functionality and replication worked properly.

Similarly, PCRF can release an APN's bearer on receiving an SOS call only if 'bearer_release' is true for that APN; if 'bearer_release' is false for an APN, E911 bearer management cannot take effect for that APN.
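The per-APN gating described above amounts to two lookups in the Lwr-Apn-Mapping table. The sketch below models each row as two booleans named after the table's 'enable_lwr_write' and 'bearer_release' columns. The function names are hypothetical, and treating an APN that has no row as disabled for both checks is an assumption made here, not a documented behavior.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class LwrApnMappingRow:
    """One Lwr-Apn-Mapping row; rows are stored in a dict keyed by APN."""
    enable_lwr_write: bool
    bearer_release: bool


def lwr_write_enabled(table: dict[str, LwrApnMappingRow], apn: str) -> bool:
    """UDC writes lwrpcrferab to LWR only when enable_lwr_write is true for the APN."""
    row = table.get(apn)
    # Assumption: an APN with no row is treated as disabled.
    return row is not None and row.enable_lwr_write


def bearer_release_allowed(table: dict[str, LwrApnMappingRow], apn: str) -> bool:
    """PCRF may release this APN's bearer on an SOS call only when bearer_release is true."""
    row = table.get(apn)
    # Assumption: an APN with no row is never released.
    return row is not None and row.bearer_release
```

Keeping the two flags independent matches the text: an APN can have 'lwrpcrferab' written to LWR while its bearer is never released, or the reverse.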

Custom Reference Data Table: Lwr-Apn-Mapping

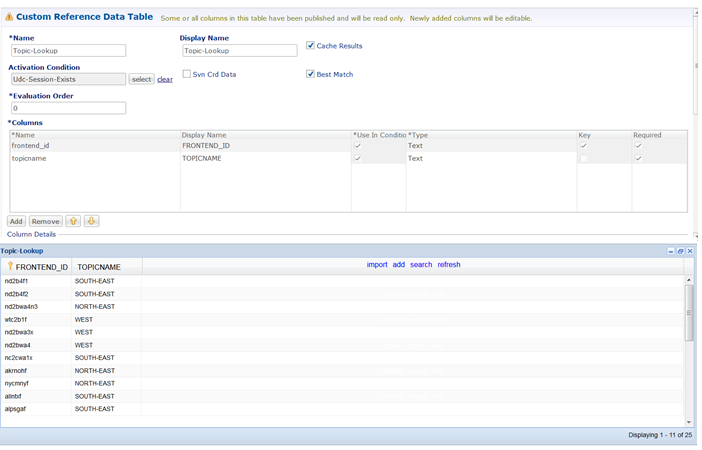

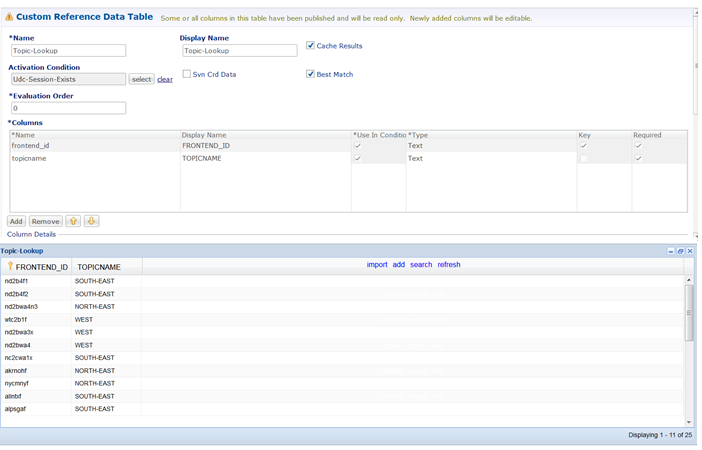

Topic-Lookup

This CRD table is used to derive the topic name from the Front End Id. The LWR service option uses this information to connect to the topic that the PCRF is configured for.
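A Topic-Lookup table keyed by Front End Id can be sketched as a plain dictionary. In this hypothetical version, `build_topic_lookup` turns (Front End Id, topic) rows into a map and rejects rows that assign two different topics to the same Front End Id, and `resolve_topic` fails with an explicit error when no row exists rather than silently writing to a default topic. Both the row shape and the error handling are assumptions for illustration.

```python
from __future__ import annotations


def build_topic_lookup(rows: list[tuple[str, str]]) -> dict[str, str]:
    """Turn (Front End Id, topic) rows into a dict, rejecting conflicting rows for one Front End Id."""
    lookup: dict[str, str] = {}
    for front_end_id, topic in rows:
        existing = lookup.get(front_end_id)
        if existing is not None and existing != topic:
            raise ValueError(f"Front End Id {front_end_id!r} maps to both {existing!r} and {topic!r}")
        lookup[front_end_id] = topic
    return lookup


def resolve_topic(lookup: dict[str, str], front_end_id: str) -> str:
    """Return the topic for this PCRF's Front End Id, failing loudly if no row exists."""
    try:
        return lookup[front_end_id]
    except KeyError:
        raise LookupError(f"no Topic-Lookup row for Front End Id {front_end_id!r}") from None
```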

Custom Reference Data Table: Topic-Lookup

Key Concepts and Data Flow

Attribute Replication

- The primary replicated attribute is 'lwrpcrferab', encoding bearer state relevant to E911.

- PCRF writes this attribute to the UDC, which then propagates it through LWR.

- LWR replicates the attribute across sites, updating the local UDC and PCRF at each site to keep bearer states synchronized.
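The replication path above (PCRF to local UDC, then through LWR to every other site) can be approximated in a few lines. In this sketch, `Site` and `pcrf_write_lwrpcrferab` are hypothetical, the attribute value string is an invented placeholder because the document does not specify how 'lwrpcrferab' is encoded, and the loop over sites stands in for LWR. Replication through Kafka is asynchronous in practice, so remote sites would see the value shortly after the origin rather than at the same instant.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Site:
    """One site's local UDC store: subscriber -> attribute name -> value."""
    name: str
    udc: dict[str, dict[str, str]] = field(default_factory=dict)


def pcrf_write_lwrpcrferab(origin: Site, all_sites: list[Site], subscriber: str, value: str) -> None:
    """Write lwrpcrferab to the origin site's UDC, then copy it to every other site.

    The loop is a synchronous stand-in for LWR replication.
    """
    origin.udc.setdefault(subscriber, {})["lwrpcrferab"] = value
    for site in all_sites:
        if site is not origin:
            site.udc.setdefault(subscriber, {})["lwrpcrferab"] = value
```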

Domain and Service Updates

- A new domain supports SOS APN attribute management via UDC and LDAP.

- Existing SOS domains now use the 'lwrpcrferab' attribute.

- SOS call acceptance is delayed to allow bearer release.

- New bearer/session requests are rejected during SOS calls.

- IMS and MCPTT bearers are released when an SOS call is initiated.

- Bearers are paused during SOS calls and restored afterward.

Assumptions

- LWR write enablement is controlled per APN in order to allow phased deployment and testing.

- PCRF writes the 'lwrpcrferab' attribute only on new session requests or if the attribute already exists, preventing excessive writes.

- A configurable delay in SOS call acceptance (600 ms by default) allows PCRF to release lower-priority bearers before the emergency call is established.

- Stale attribute guard timers ensure timely cleanup of outdated SOS sessions or attributes.
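Two of the assumptions above are simple enough to express directly: the write guard for 'lwrpcrferab' and cleanup driven by a stale-attribute guard timer. This is a minimal sketch with hypothetical names; timestamps are passed in explicitly so the logic is deterministic, and the guard duration is a parameter because the document does not give a value.

```python
from __future__ import annotations


def should_write_lwrpcrferab(is_new_session: bool, attribute_exists: bool) -> bool:
    """Write only on a new session request or when the attribute already exists."""
    return is_new_session or attribute_exists


def purge_stale(written_at: dict[str, float], now: float, guard_seconds: float) -> list[str]:
    """Drop entries whose age has reached the guard time and return their keys.

    `written_at` maps a hypothetical key (for example, a subscriber ID) to the time,
    in seconds, at which the attribute was written.
    """
    stale = [key for key, ts in written_at.items() if now - ts >= guard_seconds]
    for key in stale:
        del written_at[key]
    return stale
```

Collecting the stale keys before deleting them avoids mutating the dictionary while iterating over it.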

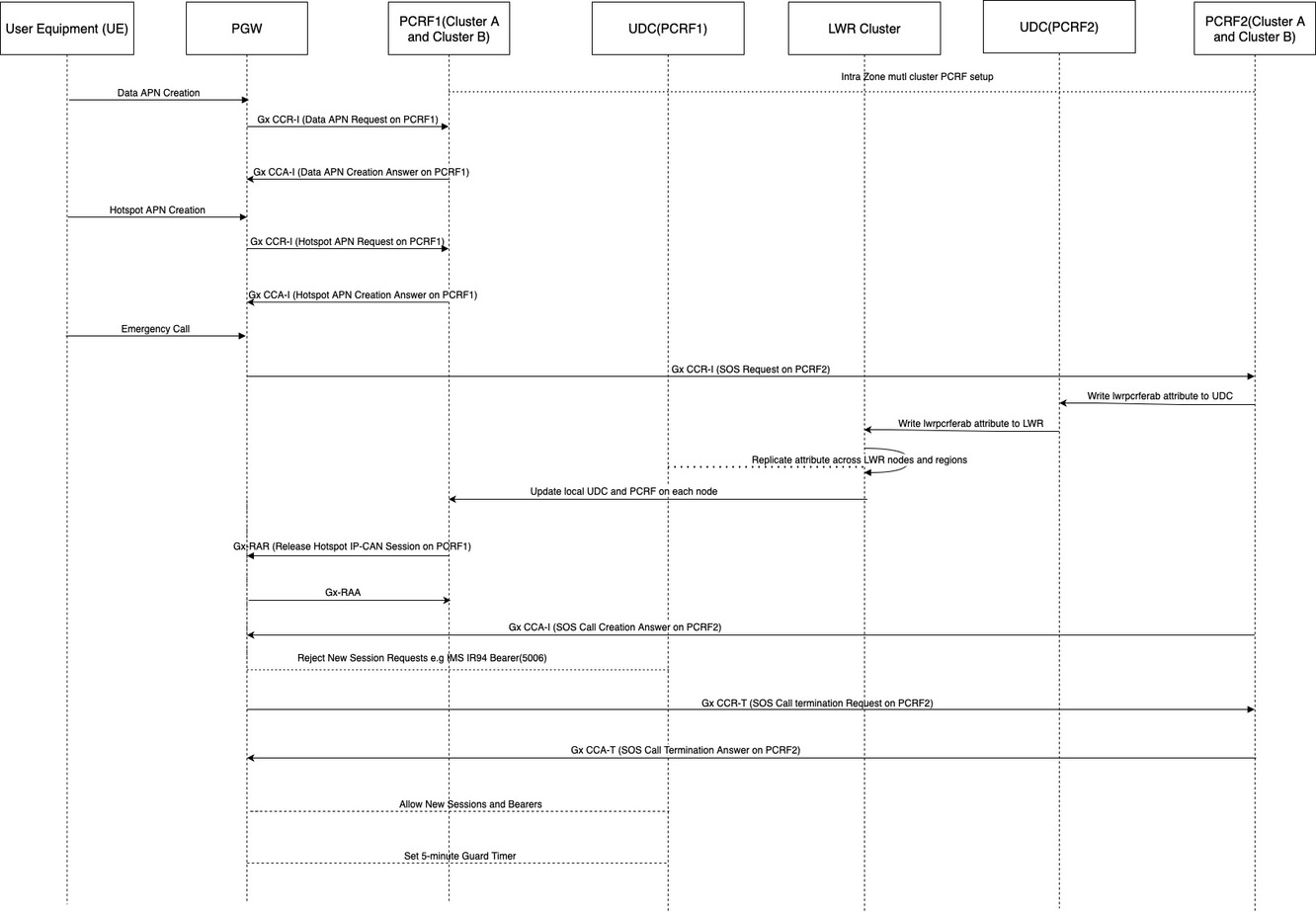

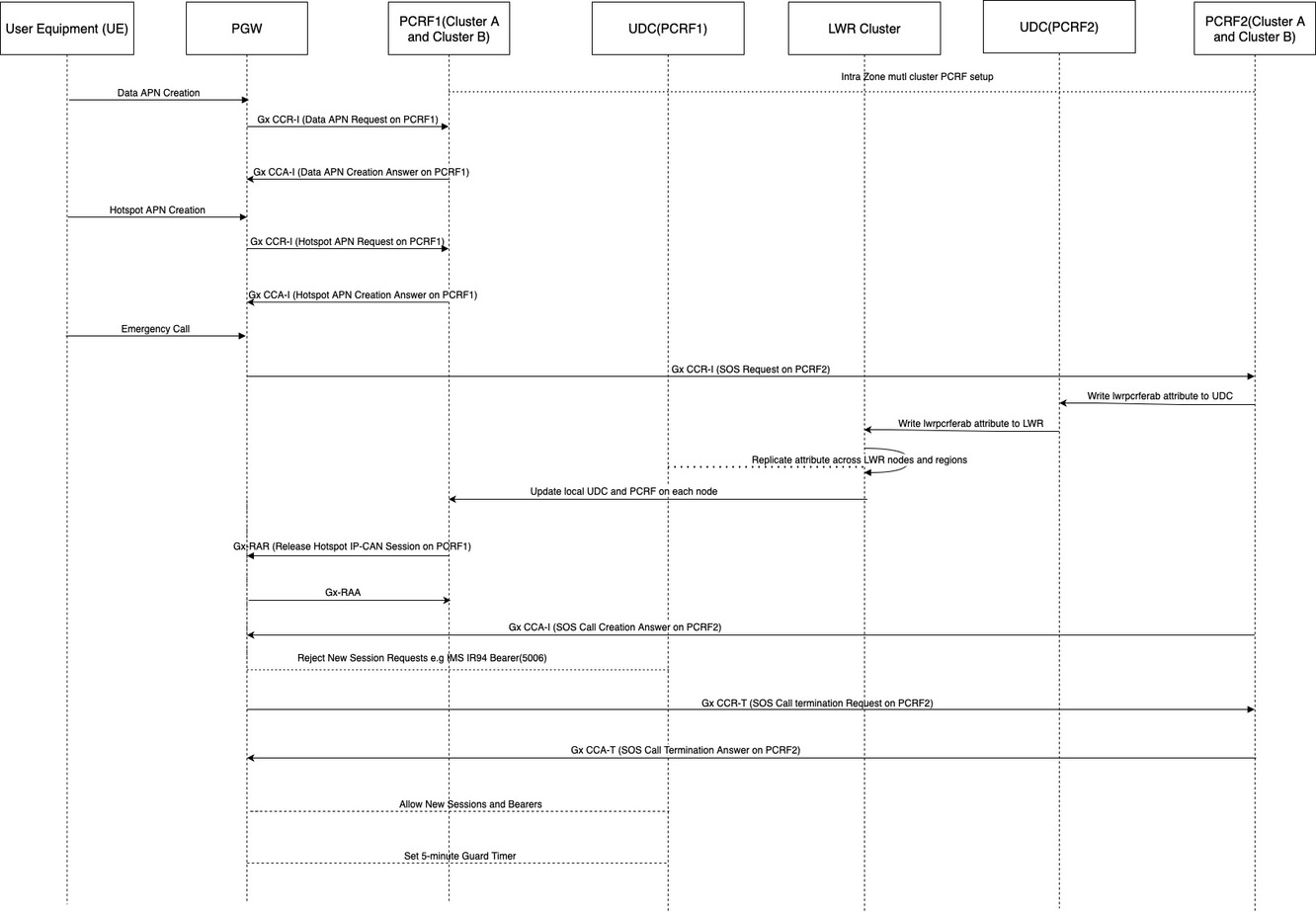

Call Flow

- An 'attach' for the data APN is sent to the PGW; the PGW sends a CCR-I to PCRF A and receives a successful response.

- An 'attach' for the hotspot APN is sent to the PGW; the PGW sends a CCR-I to PCRF A and receives a successful response.

- An emergency call is sent to the PGW; the PGW sends a CCR-I to PCRF B and receives a successful response.

- The PCRF updates the 'lwrpcrferab' attribute, setting its stage to 'Start' and its priority to '1'. This likely marks the beginning of emergency call handling at the highest priority.

- The PCRF writes this updated 'lwrpcrferab' attribute to the UDC.

- The UDC then writes the 'lwrpcrferab' attribute to the LWR. The 'lwrpcrferab' attribute is replicated across all nodes and regions within the LWR cluster to ensure consistency and availability.

- Each node in the PCRF multi-cluster updates its local UDC and PCRF instances with the replicated attribute information.

- The PCRF then releases lower-priority bearers. Examples of these lower-priority services include hotspot, IMS video, and IPME. This action frees up network resources for the high-priority emergency call.

- The SOS CCA-I is sent after a configured delay (600 ms by default), which gives PCRF time to release lower-priority bearers before the emergency call is established.

- While the SOS call is active, PCRF rejects any new bearer or session requests for specific APNs, such as hotspot, further prioritizing the emergency call by preventing new low-priority connections.

- When the gateway sends a CCR-T to delete the SOS call, PCRF again accepts new bearer creation requests for the data APN.
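The call flow above can be traced with a small simulation. It is a sketch under several assumptions: the APN names are placeholders, the replicated 'lwrpcrferab' value is reduced to a stage and a priority, replication is modeled as a loop over PCRFs, and the 'End' stage used when the SOS session is deleted is hypothetical because the document names only the 'Start' stage. The 600 ms delay is returned rather than slept on, so the caller decides when to send the SOS CCA-I.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Placeholder APN names; the text lists hotspot, IMS video, and IPME as lower-priority services.
LOW_PRIORITY_APNS = {"hotspot", "ims-video", "ipme"}
# APNs whose new sessions are rejected while an SOS call is active (hotspot, per the text).
BLOCKED_DURING_SOS = {"hotspot"}
# Default delay before the SOS CCA-I is sent, per the text.
SOS_CCA_DELAY_S = 0.600


@dataclass
class Pcrf:
    name: str
    sessions: set[str] = field(default_factory=set)
    sos_active: bool = False

    def ccr_i(self, apn: str) -> bool:
        """Handle a CCR-I: True means a successful CCA-I, False means the request is rejected."""
        if self.sos_active and apn in BLOCKED_DURING_SOS:
            return False
        self.sessions.add(apn)
        return True

    def apply_lwrpcrferab(self, stage: str, priority: int) -> list[str]:
        """React to a replicated lwrpcrferab value and return the APNs whose bearers were released."""
        if stage == "Start" and priority == 1:
            self.sos_active = True
            released = sorted(self.sessions & LOW_PRIORITY_APNS)
            self.sessions -= LOW_PRIORITY_APNS
            return released
        if stage == "End":  # hypothetical stage value for the end of the SOS call
            self.sos_active = False
        return []


def sos_ccr_i(cluster: list[Pcrf]) -> tuple[dict[str, list[str]], float]:
    """Set lwrpcrferab to stage 'Start', priority 1, and replicate it to every PCRF.

    Returns the bearers released per PCRF and the delay to wait before sending the SOS CCA-I.
    """
    released = {pcrf.name: pcrf.apply_lwrpcrferab("Start", 1) for pcrf in cluster}
    return released, SOS_CCA_DELAY_S


def sos_ccr_t(cluster: list[Pcrf]) -> None:
    """The SOS session is deleted: replicate the end of the call so blocked APNs are accepted again."""
    for pcrf in cluster:
        pcrf.apply_lwrpcrferab("End", 1)
```

In this model, PCRF A releases its hotspot bearer as soon as the 'Start' value reaches it, rejects a new hotspot CCR-I while the SOS call is active, and accepts it again after the SOS session ends. The data APN session is never released because it is not in the low-priority set.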

Benefits and Impact

- High Availability and Scalability: Kafka-based LWR ensures real-time replication and fault tolerance across multiple datacenters.

- Priority Handling: Enables dynamic release or pausing of lower-priority bearers during emergency calls.

- Operational Control: Supports phased feature enablement and fine-grained bearer management per APN.

- Improved Emergency Call Quality: Efficient bearer resource management supports reliable E911 call setup and maintenance.

Conclusion

The Bearer Management solution using LWR provides a robust, scalable, and efficient mechanism in order to prioritize and manage LTE bearers during E911 calls. By leveraging Kafka-based replication and synchronized attribute management, it ensures high availability, operational flexibility, and improved emergency call reliability.
