How data center networking works

Data center networking enables communication between computing resources by forwarding traffic between servers, storage systems, and external networks using standard protocols such as Ethernet and IP. At a high level, this process involves:

- Packet routing and switching

- Traffic pattern management (north-south and east-west)

- Software-defined control planes

- Network virtualization and segmentation

- Load balancing and security enforcement

Packet routing and switching

At the most basic level, the network functions by moving data packets between devices. Switches handle the majority of internal traffic, directing data to the correct server or storage node within the facility. Routers manage the flow of data between different networks, including the connection between the data center and the public internet or other remote sites.
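The routing decision described above can be illustrated with a longest-prefix match, the rule IP routers commonly use to pick among overlapping routes. This is a minimal sketch, not a description of any particular device: the `ROUTES` table, its prefixes, and the next-hop labels are all made up for illustration.

```python
import ipaddress

# Hypothetical routing table: (destination prefix, next-hop label).
ROUTES = [
    (ipaddress.ip_network("10.0.0.0/8"), "core-switch"),
    (ipaddress.ip_network("10.1.2.0/24"), "rack-12-switch"),
    (ipaddress.ip_network("0.0.0.0/0"), "internet-gateway"),  # default route
]


def next_hop(destination: str) -> str:
    """Return the next hop of the most specific route containing destination."""
    addr = ipaddress.ip_address(destination)
    matches = [(net, hop) for net, hop in ROUTES if addr in net]
    if not matches:
        raise LookupError(f"no route to {destination}")
    # The longest prefix (largest prefixlen) is the most specific match.
    _, hop = max(matches, key=lambda match: match[0].prefixlen)
    return hop
```

In this sketch an address inside the facility resolves to an internal switch, while anything else falls through to the default route toward the external network.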

Traffic pattern management: north-south and east-west

Modern networks must manage two distinct types of traffic:

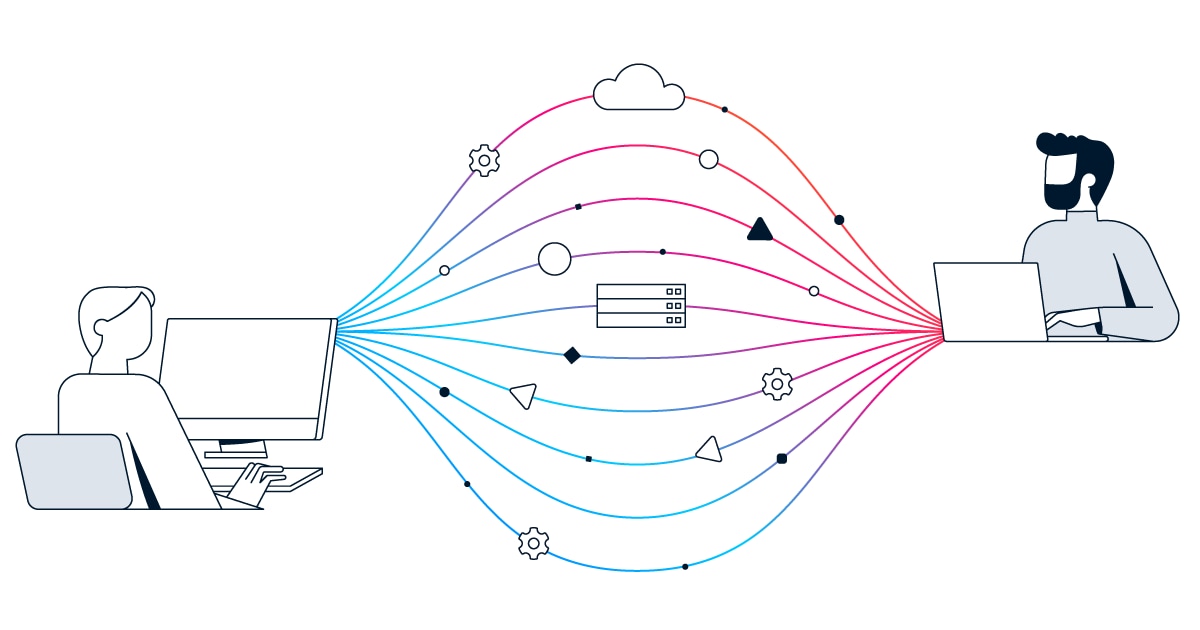

- North-south traffic refers to data moving between the data center and external users or the internet.

- East-west traffic moves internally between servers and now accounts for the majority of data center volume. It is common in distributed applications, where the components of a single workload (such as a web server and a database) must communicate constantly.
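One way to make the distinction concrete is to classify a flow by whether its endpoints sit inside the data center. The sketch below assumes the facility uses the `10.0.0.0/8` range internally; that range, the function names, and the extra "transit" label are illustrative assumptions.

```python
import ipaddress

# Assumed internal address ranges for the facility (illustrative only).
INTERNAL_PREFIXES = [ipaddress.ip_network("10.0.0.0/8")]


def is_internal(address: str) -> bool:
    """True if the address falls inside any assumed internal prefix."""
    addr = ipaddress.ip_address(address)
    return any(addr in net for net in INTERNAL_PREFIXES)


def classify_flow(src: str, dst: str) -> str:
    """Label a flow by where its two endpoints sit."""
    src_in, dst_in = is_internal(src), is_internal(dst)
    if src_in and dst_in:
        return "east-west"    # server to server, stays inside
    if src_in or dst_in:
        return "north-south"  # crosses the data center boundary
    return "transit"          # neither endpoint is internal
```

A web server at `10.0.1.5` querying a database at `10.0.2.9` would count as east-west here, while a request from an external client to that web server would count as north-south.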

Software-defined control planes

Many modern data center networks rely on a software-defined networking (SDN) architecture, which separates the control plane from the data plane:

- The control plane acts as the brain of the network, allowing for centralized management and automated configuration.

- The data plane consists of the actual hardware (the switches and routers) that forwards traffic based on the instructions received from the central controller.

Because configuration is pushed from one central point rather than entered device by device, this separation allows for much greater agility than traditional manual configuration.
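The split between the two planes can be sketched as a toy model in which a controller object programs forwarding rules and switch objects only look them up. The `Switch` and `Controller` classes, the port names, and the "drop" default are hypothetical; real SDN controllers use protocols and APIs that this sketch does not attempt to reproduce.

```python
from dataclasses import dataclass, field


@dataclass
class Switch:
    """Data plane: forwards using only the rules it has been given."""
    name: str
    flow_table: dict = field(default_factory=dict)  # destination -> output port

    def forward(self, destination: str) -> str:
        # Unknown destinations are dropped in this toy model.
        return self.flow_table.get(destination, "drop")


@dataclass
class Controller:
    """Control plane: decides the rules and installs them on the switches."""
    switches: list = field(default_factory=list)

    def install_rule(self, destination: str, ports: dict) -> None:
        # ports maps a switch name to the output port it should use.
        for switch in self.switches:
            if switch.name in ports:
                switch.flow_table[destination] = ports[switch.name]


leaf1, leaf2 = Switch("leaf1"), Switch("leaf2")
controller = Controller([leaf1, leaf2])
controller.install_rule("10.1.2.7", {"leaf1": "port-3", "leaf2": "uplink-1"})
```

A single `install_rule` call updates both switches, which is the point of the design: the forwarding devices hold no configuration logic of their own.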

Network virtualization and segmentation

To improve efficiency and security, physical network resources are often virtualized into multiple logical networks. This allows a single physical infrastructure to support many different tenants or applications while keeping their traffic logically isolated from one another.
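A simple way to picture this isolation is forwarding state keyed by a virtual network identifier as well as an address, so that two tenants can reuse the same address without colliding. The identifiers, addresses, and host labels below are invented for illustration. In practice the identifier might be a VLAN ID or a VXLAN network identifier, neither of which the text above specifies.

```python
from typing import Optional

# Forwarding state keyed by (virtual network ID, address), not address alone.
# All IDs, addresses, and host labels are illustrative assumptions.
OVERLAY = {
    (100, "192.168.0.10"): "host-a",  # tenant A
    (200, "192.168.0.10"): "host-b",  # tenant B reuses the same address
}


def resolve(network_id: int, address: str) -> Optional[str]:
    """Look up a destination only within the caller's own logical network."""
    return OVERLAY.get((network_id, address))
```

Because every lookup is scoped by `network_id`, a tenant in network 100 has no way to reach the entry that belongs to network 200.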

Network segmentation is the process of dividing the network into smaller, distinct zones with controlled traffic between them, which helps contain a security breach in one area and limits its spread across the rest of the data center.
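Segmentation is often expressed as a default-deny policy between zones. The sketch below assumes three hypothetical zones and an explicit allow-list of zone pairs; the host names, zone names, and permitted pairs are illustrative, not a recommended policy.

```python
# Hypothetical host-to-zone assignment and the zone pairs allowed to talk.
ZONE_OF = {"web-01": "web", "app-01": "app", "db-01": "database"}
ALLOWED = {("web", "app"), ("app", "database")}


def is_allowed(src_host: str, dst_host: str) -> bool:
    """Default deny: cross-zone traffic passes only if its zone pair is listed."""
    src_zone, dst_zone = ZONE_OF[src_host], ZONE_OF[dst_host]
    return src_zone == dst_zone or (src_zone, dst_zone) in ALLOWED
```

Under this policy a compromised web server can still reach the application tier, but it has no direct path to the database zone.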