How hyperscale data centers work

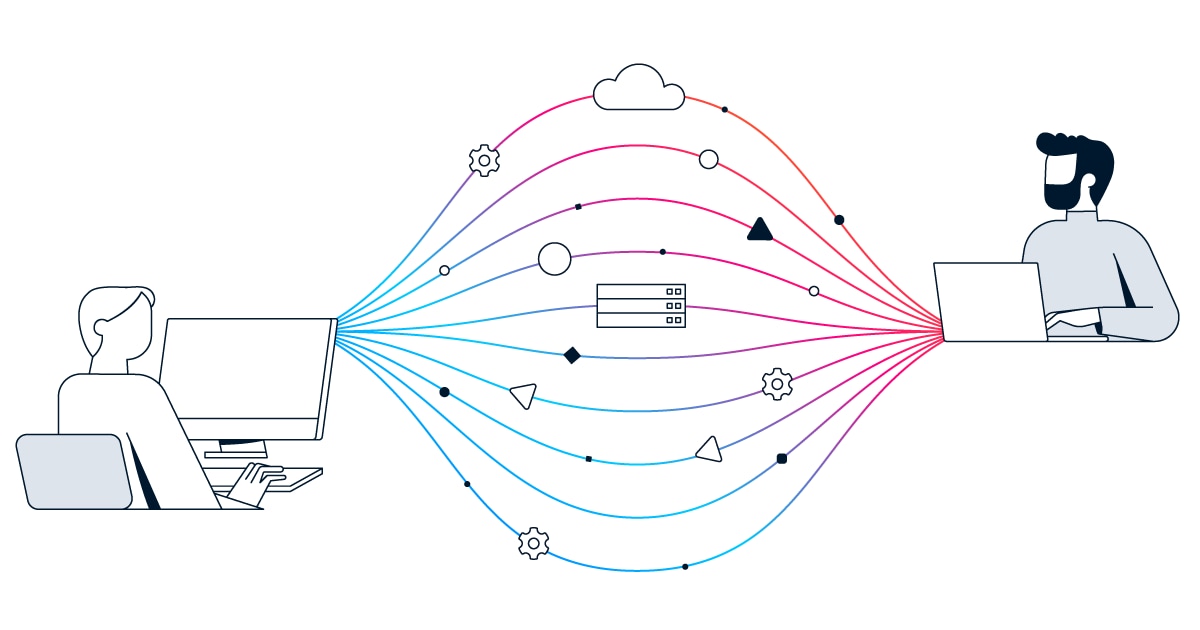

Instead of relying on a few large, complex machines, hyperscale environments use vast fleets of "commodity" hardware managed by a sophisticated software layer. The architecture is built around four integrated pillars:

- The compute layer

- The storage layer

- The networking layer

- Geographic distribution and availability zones

The compute layer

The compute layer consists of massive arrays of standardized servers that operate as a single pooled resource. These servers are managed through centralized policy and treated as interchangeable, replaceable components rather than individually maintained assets: when one fails, its workloads are rescheduled onto other servers. To maintain high performance, these servers increasingly use Data Processing Units (DPUs) or SmartNICs to offload networking, security, and storage tasks from the main CPU, so that more of the primary processor's capacity goes to the application or AI workload.
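The idea of a pooled, centrally managed fleet can be sketched in a few lines of Python. This is a minimal illustration under simplifying assumptions, not how any particular provider's scheduler works: `Server`, `Pool`, the abstract capacity units, and the "most free capacity" placement policy are all hypothetical. It shows two properties described above: workloads are placed by a single policy rather than pinned to specific machines, and a failed server is taken out of service while its tasks are rescheduled elsewhere.

```python
from dataclasses import dataclass, field


@dataclass
class Server:
    """One interchangeable commodity server in the pool (hypothetical model)."""
    name: str
    capacity: int  # abstract capacity units, e.g. vCPUs (assumption)
    healthy: bool = True
    tasks: dict[str, int] = field(default_factory=dict)  # task name -> units used

    def free(self) -> int:
        return self.capacity - sum(self.tasks.values())


class Pool:
    """Treats many servers as one pooled resource governed by a single policy."""

    def __init__(self, servers: list[Server]) -> None:
        self.servers = servers

    def place(self, task: str, units: int) -> str:
        # Hypothetical policy: pick the healthy server with the most free capacity.
        candidates = [s for s in self.servers if s.healthy and s.free() >= units]
        if not candidates:
            raise RuntimeError(f"no capacity for {task}")
        target = max(candidates, key=lambda s: s.free())
        target.tasks[task] = units
        return target.name

    def fail(self, name: str) -> dict[str, str]:
        # A failed server is not repaired in place: it is marked unhealthy and
        # its tasks are rescheduled onto the rest of the pool.
        server = next(s for s in self.servers if s.name == name)
        server.healthy = False
        orphaned, server.tasks = server.tasks, {}
        return {task: self.place(task, units) for task, units in orphaned.items()}
```

With three 8-unit servers, `place("web", 4)` lands on `s1`, `place("db", 4)` lands on `s2`, and `fail("s1")` returns `{"web": "s3"}`, because `s3` is then the healthy server with the most free capacity.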

The storage layer

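Storage in a hyperscale environment is software-defined and distributed across thousands of nodes. Rather than relying on a single storage array, the system splits data into fragments, uses erasure coding to compute additional parity fragments, and spreads the fragments across different drives, servers, and racks. As a result, the failure of multiple drives or even an entire server rack does not make the data unavailable, provided the number of lost fragments stays within what the code can tolerate: missing fragments are reconstructed from the surviving ones, and the system automatically rebalances data across the infrastructure to restore full redundancy.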
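Erasure coding can be illustrated with its simplest form, a single XOR parity fragment. The Python sketch below is deliberately reduced: it splits data into `k` fragments plus one parity fragment and can rebuild any one lost fragment. Production systems typically use codes such as Reed-Solomon, which add several parity fragments and therefore survive several simultaneous failures; the function names and fragment layout here are illustrative assumptions.

```python
from functools import reduce
from typing import Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def encode(data: bytes, k: int) -> list[bytes]:
    """Split data into k equal-size data fragments plus one XOR parity fragment."""
    size = -(-len(data) // k)              # ceiling division
    padded = data.ljust(size * k, b"\0")   # pad so all fragments are equal length
    fragments = [padded[i * size:(i + 1) * size] for i in range(k)]
    parity = reduce(xor_bytes, fragments)  # XOR of all data fragments
    return fragments + [parity]


def reconstruct(fragments: list[Optional[bytes]]) -> list[bytes]:
    """Rebuild at most one missing fragment (None) by XOR-ing the survivors.

    Because the XOR of all k + 1 fragments is zero, any single missing
    fragment equals the XOR of the remaining ones.
    """
    missing = [i for i, f in enumerate(fragments) if f is None]
    if len(missing) > 1:
        raise ValueError("single-parity code can only recover one lost fragment")
    if missing:
        survivors = [f for f in fragments if f is not None]
        fragments = list(fragments)
        fragments[missing[0]] = reduce(xor_bytes, survivors)
    return fragments


def decode(fragments: list[bytes], k: int, length: int) -> bytes:
    """Join the k data fragments and strip the padding added by encode()."""
    return b"".join(fragments[:k])[:length]
```

Placing each of the `k + 1` fragments on a different rack is what would let this scheme survive the loss of a whole rack; tolerating more simultaneous failures requires more parity fragments.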

The networking layer

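Networking is optimized for high-volume internal "east-west" traffic, that is, server-to-server traffic within the data center rather than traffic entering or leaving it. Most hyperscale facilities employ a leaf-spine architecture, in which every leaf switch (typically a top-of-rack switch) connects to every spine switch. Because any two servers on different leaves are always the same number of hops apart (leaf, spine, leaf), latency is consistent and performance is predictable regardless of where a workload is placed. The topology also scales roughly linearly: new server "pods" attach to the existing spine layer, and leaf-to-leaf bandwidth can be increased by adding spine switches, so network capacity grows with the fleet without requiring a redesign of the core infrastructure.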
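The uniform hop count of a leaf-spine fabric can be checked with a small graph model. The Python sketch below assumes an idealized fabric in which every leaf connects to every spine with exactly one link, and it ignores link speeds and oversubscription; the node names are hypothetical. A breadth-first search counts both the hop distance and the number of equal-length paths between two leaves.

```python
from collections import deque
from itertools import combinations


def leaf_spine(num_leaves: int, num_spines: int) -> dict[str, set[str]]:
    """Build an idealized leaf-spine graph: every leaf links to every spine,
    with no leaf-to-leaf or spine-to-spine links."""
    leaves = [f"leaf{i}" for i in range(num_leaves)]
    spines = [f"spine{j}" for j in range(num_spines)]
    graph: dict[str, set[str]] = {node: set() for node in leaves + spines}
    for leaf in leaves:
        for spine in spines:
            graph[leaf].add(spine)
            graph[spine].add(leaf)
    return graph


def hops_and_paths(graph: dict[str, set[str]], src: str, dst: str) -> tuple[int, int]:
    """BFS returning (shortest hop count, number of distinct shortest paths)."""
    dist = {src: 0}
    count = {src: 1}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        for nbr in graph[node]:
            if nbr not in dist:            # first time reached: record its level
                dist[nbr] = dist[node] + 1
                count[nbr] = count[node]
                queue.append(nbr)
            elif dist[nbr] == dist[node] + 1:  # another shortest path into nbr
                count[nbr] += count[node]
    return dist[dst], count[dst]


def leaf_pair_stats(graph: dict[str, set[str]], num_leaves: int) -> set[tuple[int, int]]:
    """Collect (hops, paths) for every leaf pair; one entry means the fabric is uniform."""
    leaves = [f"leaf{i}" for i in range(num_leaves)]
    return {hops_and_paths(graph, a, b) for a, b in combinations(leaves, 2)}
```

In this model every pair of leaves is exactly two hops apart, so `leaf_pair_stats(leaf_spine(4, 2), 4)` returns `{(2, 2)}`. Adding leaves leaves the hop count unchanged, while adding spines increases the number of parallel equal-cost paths (`leaf_pair_stats(leaf_spine(8, 4), 8)` returns `{(2, 4)}`), which is how extra spine capacity becomes extra leaf-to-leaf bandwidth when traffic is spread across those paths.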

Geographic distribution and availability zones

Hyperscale providers organize their global footprint into Regions. Each region typically consists of three or more Availability Zones (AZs), each made up of one or more physically separate data centers with independent power and networking, usually located within the same metropolitan area. The AZs are connected by high-bandwidth, low-latency fiber links, which makes synchronous data replication between them practical. Services deployed across multiple AZs can therefore remain online even if an entire facility experiences a power or network failure.
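One common way to use the fast links between zones is majority-quorum replication: a write counts as committed once a majority of zones acknowledge it, so a region with three AZs keeps accepting writes after losing one zone. The Python sketch below is a simplified, hypothetical model (`ZoneReplica`, `quorum_write`, and the zone names are illustrative) and not any provider's actual replication protocol. Real systems also need a consensus protocol such as Paxos or Raft to order writes and clean up partial failures, which this sketch omits.

```python
from dataclasses import dataclass, field


@dataclass
class ZoneReplica:
    """A copy of the data held in one Availability Zone (hypothetical model)."""
    zone: str
    online: bool = True
    store: dict[str, str] = field(default_factory=dict)


def quorum_write(replicas: list[ZoneReplica], key: str, value: str) -> bool:
    """Send the write to every zone; report success only if a majority acked.

    Simplification: a failed write is not rolled back on the zones that did
    apply it; a real protocol would have to reconcile those replicas.
    """
    acks = 0
    for replica in replicas:
        if replica.online:  # an offline zone cannot acknowledge the write
            replica.store[key] = value
            acks += 1
    return acks > len(replicas) // 2
```

With three zones, a write succeeds when all three or any two are online, and fails once only one zone remains, because one acknowledgement out of three is not a majority.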