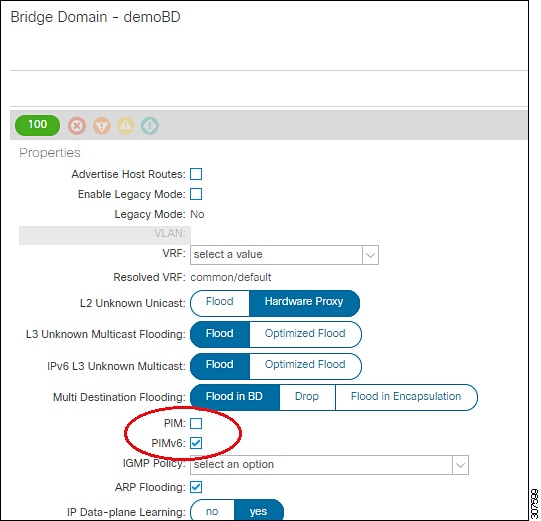

ACI supports control plane configurations that can be used to control who can receive multicast feeds and from which sources. The filtering options include IGMP report filters, PIM join or prune filters, PIM neighbor filters, and Rendezvous Point (RP) filters. These options rely on control plane protocols, namely IGMP and PIM.

In some deployments, it may be desirable to constrain the sending or receiving of multicast streams at the data plane level. For example, you may want to allow multicast senders in a LAN to send only to specific multicast groups, or to allow receivers in a LAN to receive only specific multicast groups, originated either from all possible sources or from specific sources.

Beginning with Cisco APIC Release 5.0(1), the multicast filtering feature allows you to filter multicast traffic in two directions: source filtering at the first-hop router and receiver filtering at the last-hop router.

Configuring Multicast Filtering: Source Filtering at First-Hop Router

If you have configured a multicast source filter for a bridge domain, the source and group of any traffic sent on that bridge domain are matched against the entries in the source filter route map. One of the following actions takes place, depending on the action associated with the matching entry:

- If the source and group match an entry with a Permit action in the route map, the bridge domain allows traffic to be sent out from that source to that group.
- If the source and group match an entry with a Deny action in the route map, the bridge domain blocks traffic from being sent out from that source to that group.
- If the source and group do not match any entry in the route map, the bridge domain blocks traffic from being sent out from that source to that group by default. This means that once the route map is applied, an implicit "deny all" statement is always in effect at the end.

You can configure multiple entries in a single route map, mixing entries that have a Permit action with entries that have a Deny action.
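The matching behavior described above can be illustrated with a small Python model. This is only a sketch and not APIC code or an APIC API: the `Entry` class, the `evaluate` function, and the sample prefixes are hypothetical, and the model assumes that entries are evaluated in ascending order with the first match deciding the outcome.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class Entry:
    """Hypothetical route map entry (not an APIC object)."""
    order: int    # assumed evaluation order, lowest first
    source: str   # source prefix, for example "10.1.1.0/24"
    group: str    # multicast group prefix, for example "239.1.1.0/24"
    action: str   # "permit" or "deny"


def evaluate(route_map, source, group):
    """Return the action applied to traffic from `source` to `group`."""
    src, grp = ip_address(source), ip_address(group)
    for entry in sorted(route_map, key=lambda e: e.order):
        if src in ip_network(entry.source) and grp in ip_network(entry.group):
            return entry.action
    # No entry matched: the implicit "deny all" at the end applies.
    return "deny"


# One route map that mixes Permit and Deny entries.
source_filter = [
    Entry(1, "10.1.1.0/24", "239.1.1.0/24", "permit"),
    Entry(2, "10.1.2.0/24", "239.1.1.0/24", "deny"),
]

print(evaluate(source_filter, "10.1.1.5", "239.1.1.10"))  # matches entry 1
print(evaluate(source_filter, "10.1.2.5", "239.1.1.10"))  # matches entry 2
print(evaluate(source_filter, "10.1.3.5", "239.1.1.10"))  # no match
```

In this model, the third lookup is denied even though no Deny entry covers it, which is the practical effect of the implicit "deny all" statement.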

Note: When a source filter is applied to a bridge domain, it filters multicast traffic at the source. Traffic blocked by the filter is not received by receivers in the same bridge domain, receivers in different bridge domains, or external receivers.

Configuring Multicast Filtering: Receiver Filtering at Last-Hop Router

Multicast receiver filtering restricts the sources from which receivers in a bridge domain can receive multicast traffic for a particular group. This feature provides source or group data plane filtering functionality for IGMPv2 hosts, similar to what IGMPv3 provides at the control plane.

If you have configured a multicast receiver filter for a bridge domain, the source and group for any receivers sending joins on that bridge domain are matched against the entries in the receiver filter route map. One of the following actions takes place, depending on the action associated with the matching entry:

- If the source and group match an entry with a Permit action in the route map, the bridge domain allows traffic to be received from that source for that group.
- If the source and group match an entry with a Deny action in the route map, the bridge domain blocks traffic from being received from that source for that group.
- If the source and group do not match any entry in the route map, the bridge domain blocks traffic from being received from that source for that group by default. This means that once the route map is applied, an implicit "deny all" statement is always in effect at the end.

You can configure multiple entries in a single route map, mixing entries that have a Permit action with entries that have a Deny action.
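To make the effect on IGMPv2 hosts more concrete, the following Python sketch models which sources a receiver that joined a group would actually hear from once a receiver filter is applied. It is an illustration only: `RECEIVER_FILTER`, `action_for`, and `sources_delivered` are hypothetical names, the addresses are made up, and the entries are assumed to be evaluated in the order listed.

```python
from ipaddress import ip_address, ip_network

# Hypothetical receiver filter: (source prefix, group prefix, action) tuples,
# listed in the order they are assumed to be evaluated.
RECEIVER_FILTER = [
    ("10.1.1.0/24", "239.1.1.0/24", "permit"),
    ("0.0.0.0/0", "239.1.2.0/24", "deny"),
]


def action_for(route_map, source, group):
    """Return the action of the first matching entry, or the implicit deny."""
    for src_prefix, grp_prefix, action in route_map:
        if (ip_address(source) in ip_network(src_prefix)
                and ip_address(group) in ip_network(grp_prefix)):
            return action
    return "deny"  # implicit "deny all" at the end of the route map


def sources_delivered(route_map, group, active_sources):
    """List the sources whose traffic for `group` reaches local receivers."""
    return [s for s in active_sources
            if action_for(route_map, s, group) == "permit"]


# An IGMPv2 host joins 239.1.1.10 without naming a source; two sources send.
active = ["10.1.1.5", "10.9.9.9"]
print(sources_delivered(RECEIVER_FILTER, "239.1.1.10", active))
```

Although the IGMPv2 join names only the group, the modeled filter delivers traffic from `10.1.1.5` and drops traffic from `10.9.9.9`, which resembles the source selection that IGMPv3 expresses in the control plane.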

Combined Source and Receiver Filtering on Same Bridge Domain

You can also enable both multicast source filtering and multicast receiver filtering on the same bridge domain. That bridge domain can then block or permit sources that send traffic to a group range, and can also restrict or allow the receiving of traffic from sources for a group range.
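A minimal Python sketch of a bridge domain that carries both filters is shown below. It is a conceptual model rather than an APIC configuration: the `BridgeDomain` class, the `may_send` and `may_receive` helpers, and the prefixes are hypothetical, and the model assumes that a bridge domain with no filter of a given type applies no restriction in that direction.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import List, Optional, Tuple

# Hypothetical route map: ordered (source prefix, group prefix, action) tuples.
RouteMap = List[Tuple[str, str, str]]


def action_for(route_map: RouteMap, source: str, group: str) -> str:
    """Return the action of the first matching entry, or the implicit deny."""
    for src_prefix, grp_prefix, action in route_map:
        if (ip_address(source) in ip_network(src_prefix)
                and ip_address(group) in ip_network(grp_prefix)):
            return action
    return "deny"


@dataclass
class BridgeDomain:
    name: str
    source_filter: Optional[RouteMap] = None    # None: assumed unfiltered
    receiver_filter: Optional[RouteMap] = None  # None: assumed unfiltered


def may_send(bd: BridgeDomain, source: str, group: str) -> bool:
    """Source filtering: can `source` in this bridge domain send to `group`?"""
    return (bd.source_filter is None
            or action_for(bd.source_filter, source, group) == "permit")


def may_receive(bd: BridgeDomain, source: str, group: str) -> bool:
    """Receiver filtering: can receivers here get `group` from `source`?"""
    return (bd.receiver_filter is None
            or action_for(bd.receiver_filter, source, group) == "permit")


bd1 = BridgeDomain(
    name="BD1",
    source_filter=[("10.1.1.0/24", "239.1.1.0/24", "permit")],
    receiver_filter=[("10.2.2.0/24", "239.2.2.0/24", "permit")],
)

print(may_send(bd1, "10.1.1.5", "239.1.1.1"))     # local source, permitted group
print(may_send(bd1, "10.1.1.5", "239.2.2.1"))     # local source, other group
print(may_receive(bd1, "10.2.2.7", "239.2.2.1"))  # remote source, permitted
print(may_receive(bd1, "10.1.1.5", "239.1.1.1"))  # not in the receiver filter
```

The two filters are evaluated independently in this model, so a source and group permitted for sending can still be blocked for the receivers in the same bridge domain if the receiver filter does not permit it.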
