Cisco UCS C-Series Server Installation

- Dual Socket servers for small to medium sized deployments:
  - Cisco UCS C220-M3
  - Cisco UCS C220-M4
  - Cisco UCS C240-M3
  - Cisco UCS C240-M4

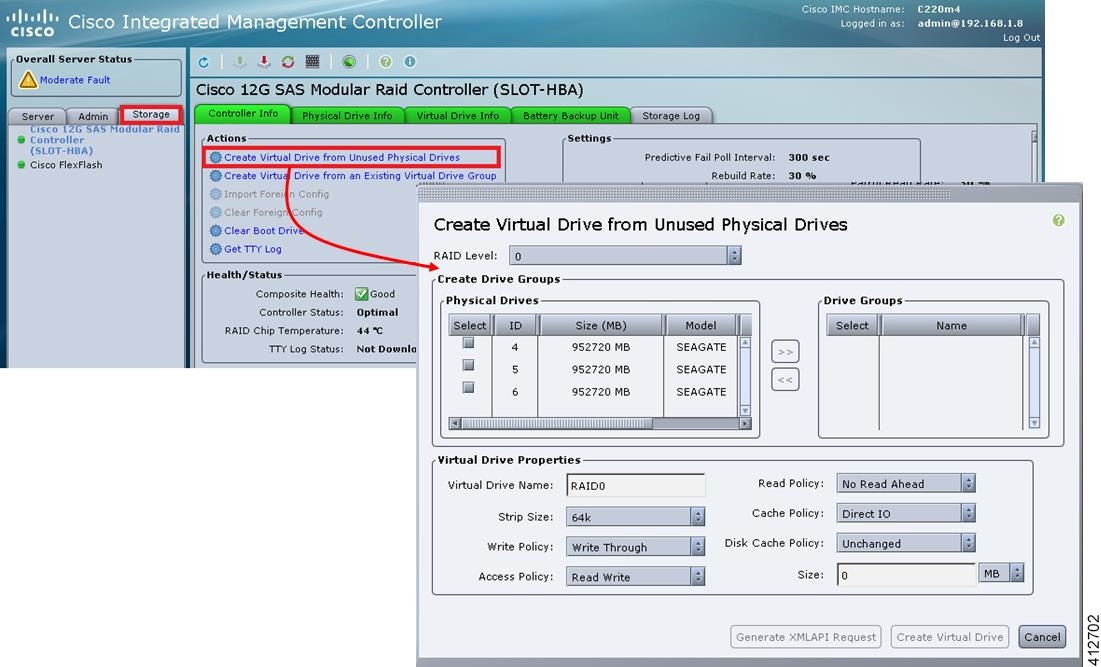

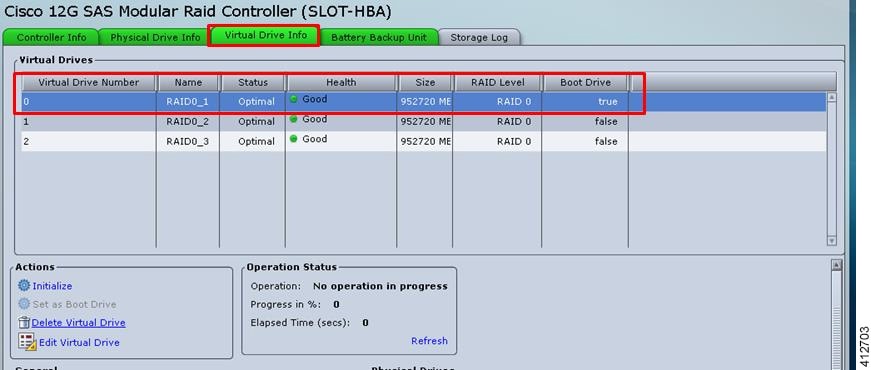

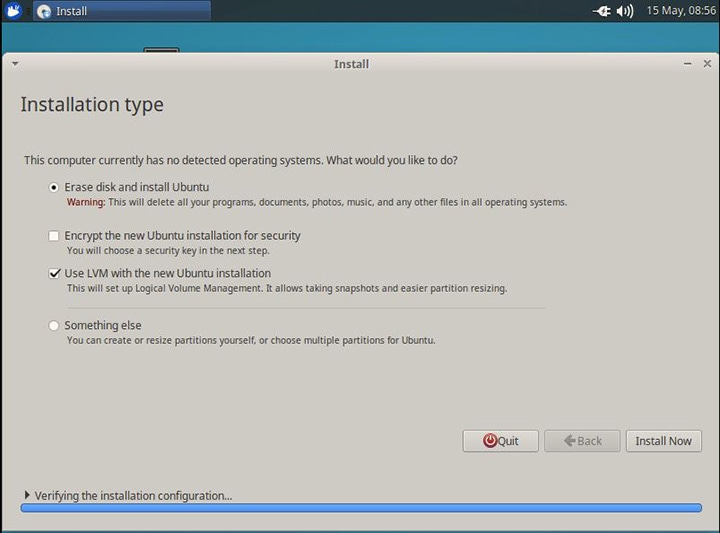

Cisco Modeling Labs has relatively modest storage requirements: a Direct Attached Storage (DAS) disk with a capacity of 250 GB or larger is recommended. RAID configurations are optional. If you use a RAID configuration on the UCS C-Series server, the hardware-based version (MRAID module) is the recommended method.

Storage Area Network (SAN) options are not supported for Cisco Modeling Labs bare-metal deployments on Cisco UCS C-Series servers and are beyond the scope of this installation guide.
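The 250 GB recommendation above can be checked before installation. The following is a minimal sketch, not part of the Cisco procedure: it assumes a Linux environment has been booted on the server, that "250GB" means decimal gigabytes, and that the disk appears under a device name such as `sda` (a hypothetical name; yours may differ). The function names are illustrative only.

```python
from pathlib import Path

# Linux reports /sys/block/<device>/size in 512-byte units.
SECTOR_BYTES = 512
# Assumption: the 250 GB recommendation is read as decimal gigabytes.
RECOMMENDED_BYTES = 250 * 10**9


def disk_capacity_bytes(sector_count: int) -> int:
    """Convert a 512-byte sector count into a capacity in bytes."""
    return sector_count * SECTOR_BYTES


def meets_recommendation(capacity_bytes: int,
                         minimum_bytes: int = RECOMMENDED_BYTES) -> bool:
    """Return True when the capacity is at least the recommended minimum."""
    return capacity_bytes >= minimum_bytes


def check_device(device: str = "sda") -> bool:
    """Read the raw size of a block device from sysfs and compare it.

    "sda" is a hypothetical device name used for illustration.
    """
    size_file = Path(f"/sys/block/{device}/size")
    sectors = int(size_file.read_text().strip())
    return meets_recommendation(disk_capacity_bytes(sectors))
```

Calling `check_device("sda")` on the target server returns `True` when the disk is large enough. When a hardware RAID volume is configured, the operating system sees the virtual drive, so the value checked is the usable capacity of that volume rather than the size of any single physical disk.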

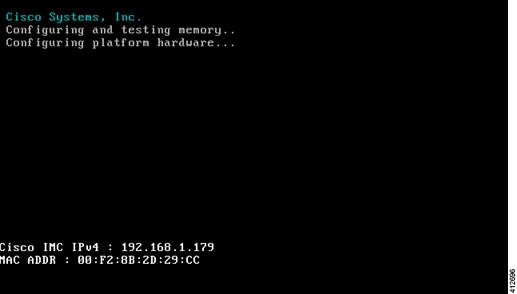

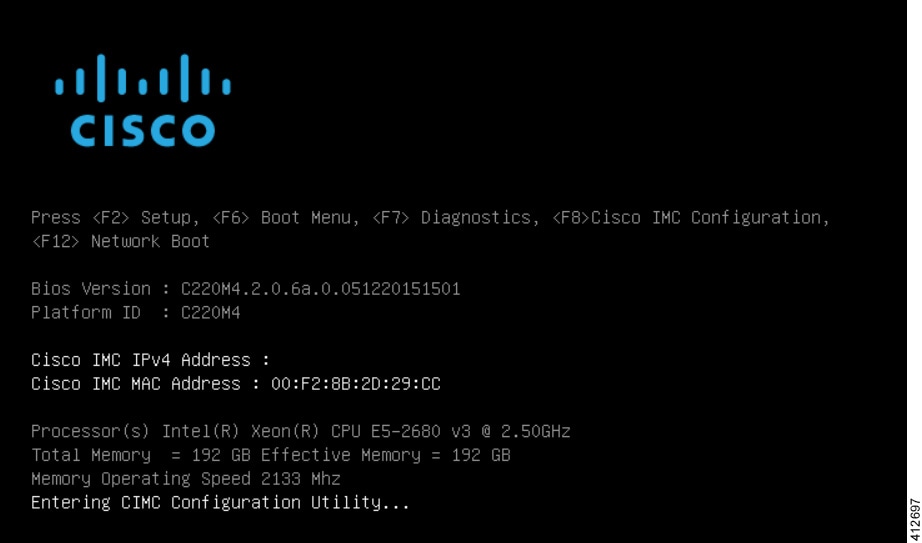

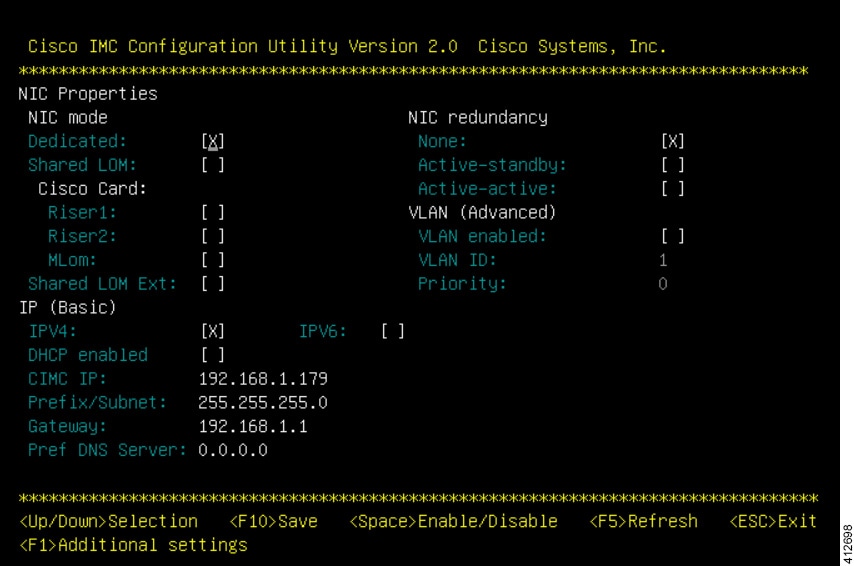

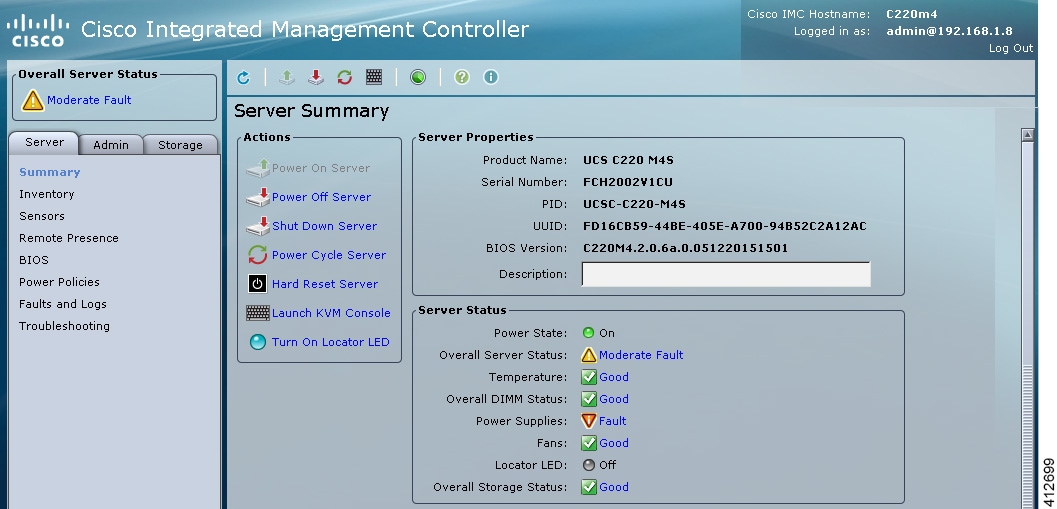

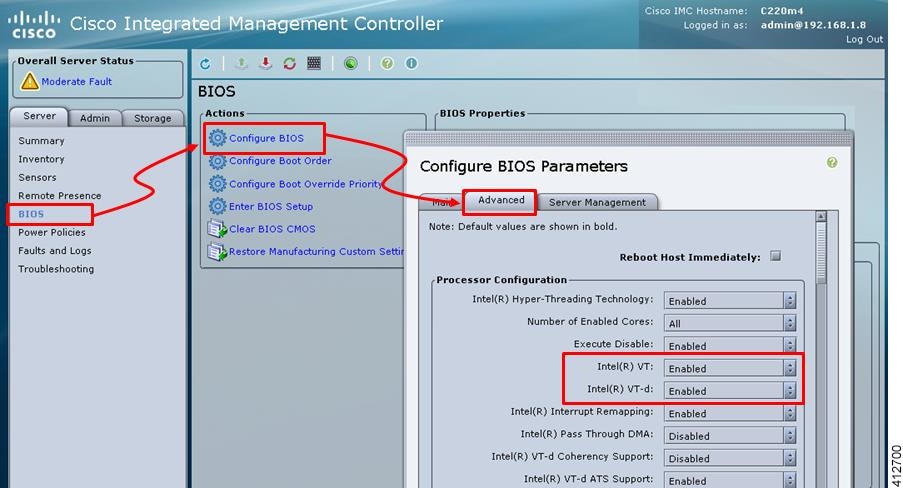

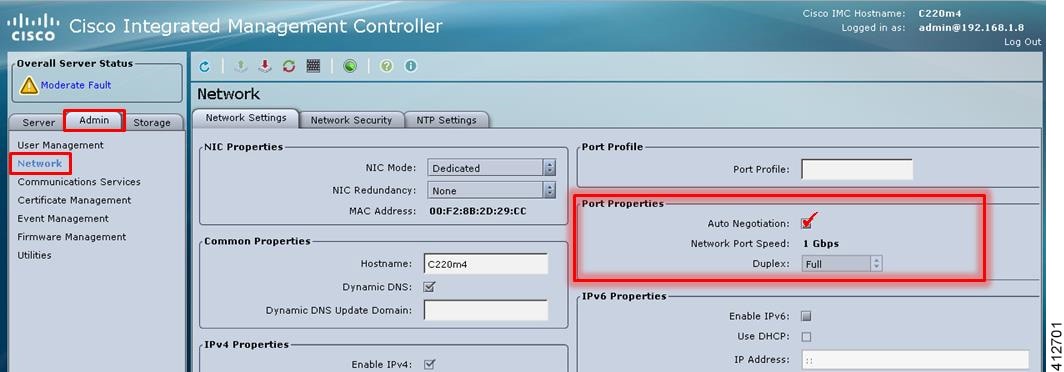

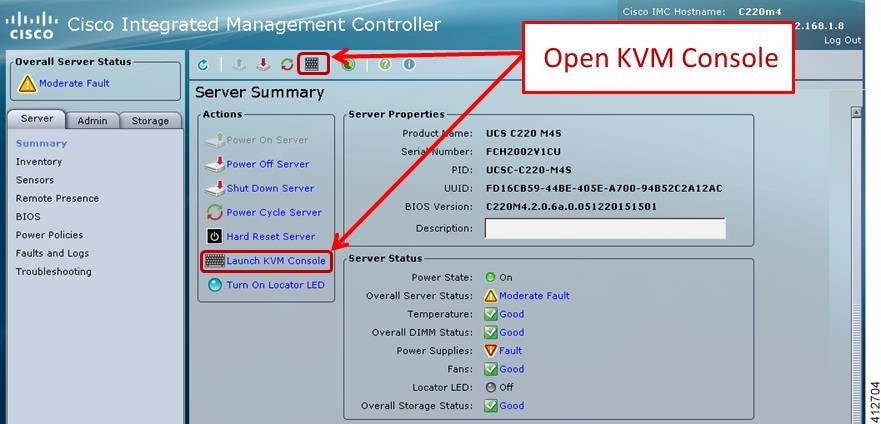

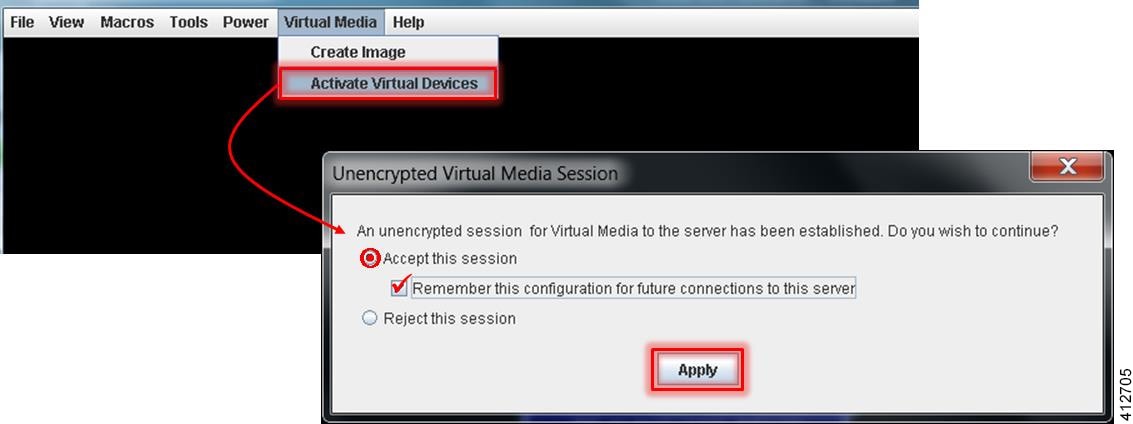

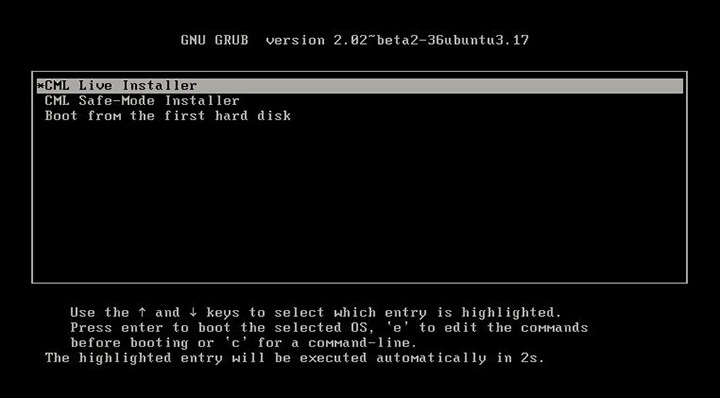

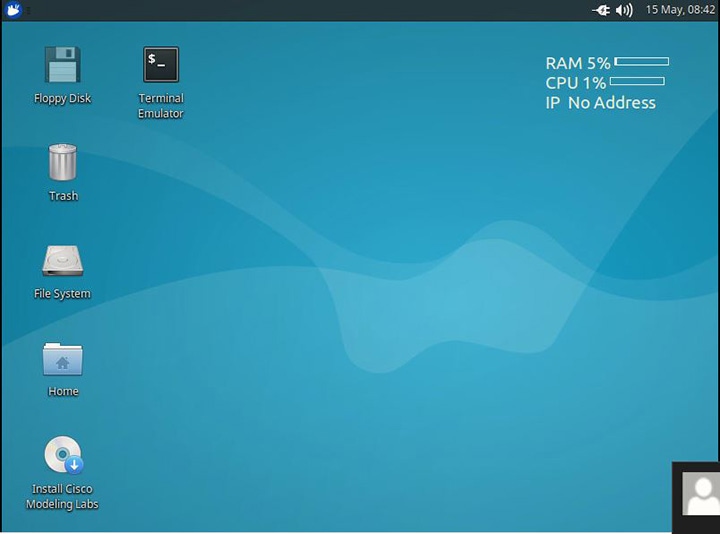

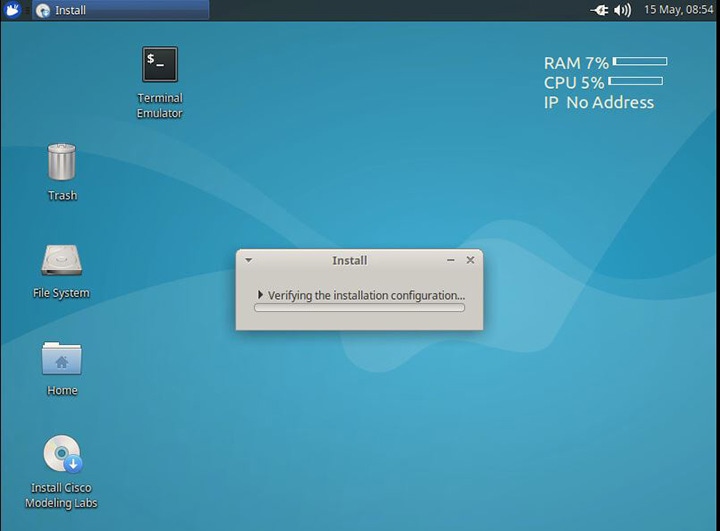

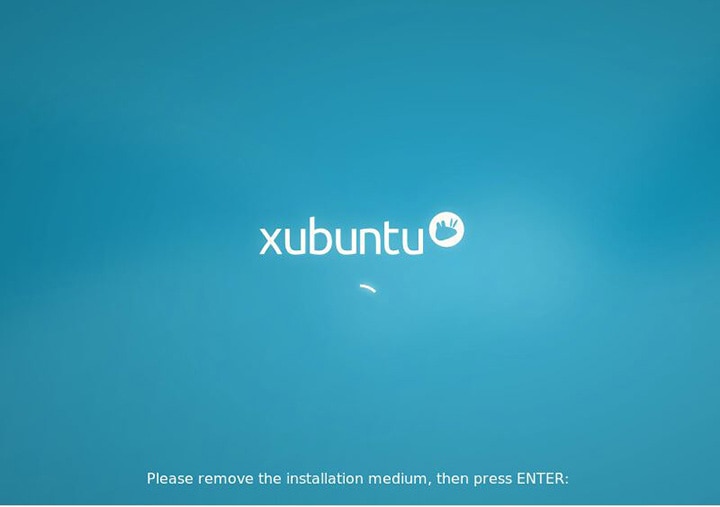

If the Cisco UCS C-Series server is being freshly deployed, some preliminary steps are needed to prepare the hardware: configuring the server's dedicated management interface (CIMC), verifying that the necessary Virtualization Technology features are enabled in the BIOS, and preparing the storage for the installation. The following steps apply to the Cisco UCS C220 M4S platform running Version 2.06(6d) BIOS/CIMC firmware. If you are using another server type or firmware level, refer to the applicable documentation and adjust the process accordingly.
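As a complement to checking the BIOS settings, the virtualization flags that the CPU advertises can be inspected from a running Linux system. This is a rough sketch under stated assumptions: it assumes a Linux environment is available on the server, and `virtualization_flags` is an illustrative helper, not a Cisco tool. On Intel processors the relevant flag in `/proc/cpuinfo` is `vmx`; `svm` is the AMD equivalent.

```python
from pathlib import Path


def virtualization_flags(cpuinfo_text: str) -> set[str]:
    """Return the hardware virtualization flags found in /proc/cpuinfo text.

    Looks only at the "flags" lines and reports "vmx" (Intel VT-x)
    and/or "svm" (AMD-V) when they are present.
    """
    found: set[str] = set()
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            found |= {"vmx", "svm"} & set(value.split())
    return found


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        flags = virtualization_flags(cpuinfo.read_text())
        print("Virtualization flags:", sorted(flags) or "none found")
    else:
        print("/proc/cpuinfo not available on this system")
```

Treat the result as a preliminary check only. Depending on the kernel, the flag may still be listed when the feature is disabled in firmware, so the BIOS settings remain the authoritative place to confirm that Virtualization Technology is enabled.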
