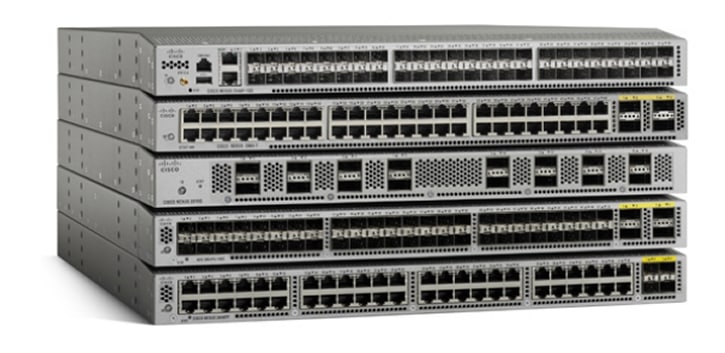

Cisco Nexus 3000 Series Switches

| Overview | Product Overview |
|---|---|
| Product Type | Data Center Switches |
| Status | Available Order |
| Series Release Date | 29-MAR-2011 |
| Diagram | Visio Stencil (9 MB .zip file) |

- Nexus 3048 Switch

Status: End of Sale | End-of-Support Date: 31-Aug-2025

- Nexus 3132C-Z Switch

Status: End of Sale | End-of-Support Date: 28-Feb-2027

- Nexus 3132Q-V Switch

Status: Available | Release Date: 02-Feb-2016

- Nexus 3132Q-XL Switch

Status: End of Sale | End-of-Support Date: 30-Apr-2025

- Nexus 3164Q Switch

Status: End of Sale | End-of-Support Date: 31-Oct-2024

- Nexus 3172PQ Switch

Status: End of Sale | End-of-Support Date: 28-Feb-2027

- Nexus 3172PQ-XL Switch

Status: End of Sale | End-of-Support Date: 28-Feb-2027

- Nexus 3172TQ Switch

Status: End of Sale | End-of-Support Date: 28-Feb-2027

- Nexus 3172TQ-32T Switch

Status: End of Sale | End-of-Support Date: 28-Feb-2027

- Nexus 3172TQ-XL Switch

Status: End of Sale | End-of-Support Date: 28-Feb-2027

- Nexus 3232C Switch

Status: Available | Release Date: 06-Jun-2015

- Nexus 3264C-E Switch

Status: End of Sale | End-of-Support Date: 28-Feb-2027

- Nexus 3264Q Switch

Status: End of Sale | End-of-Support Date: 30-Apr-2025

- Nexus 3408-S Switch

Status: Available | Release Date: 15-May-2019

- Nexus 3432D-S Switch

Status: Available | Release Date: 15-May-2019

- Nexus 3464C Switch

Status: End of Sale | End-of-Support Date: 31-Oct-2025

- Nexus 3524-X Switch

Status: End of Sale | End-of-Support Date: 31-Jan-2027

- Nexus 3524-XL Switch

Status: Available | Release Date: 01-Feb-2018

- Nexus 3548-X Switch

Status: End of Sale | End-of-Support Date: 31-Jan-2027

- Nexus 3548-XL Switch

Status: Available | Release Date: 01-Feb-2018

- Nexus 31108PC-V Switch

Status: Available | Release Date: 03-Feb-2016

- Nexus 31108TC-V Switch

Status: Available | Release Date: 03-Feb-2016

- Nexus 31128PQ Switch

Status: End of Sale | End-of-Support Date: 28-Feb-2027

- Nexus 34180YC Switch

Status: End of Sale | End-of-Support Date: 31-Oct-2025

- Nexus 34200YC-SM Switch

Status: Available | Release Date: 26-Sep-2019

- Nexus 36180YC-R Switch

Status: Available | Release Date: 08-Sep-2017

Document Categories

Data Sheets and Product Information

- Cisco Nexus 3548-X, 3524-X, 3548-XL, and 3524-XL Switches Data Sheet

- Cisco 10GBASE SFP+ Modules Data Sheet

- Cisco Nexus 3408-S Switch Data Sheet (DOCX - 634 KB)

- Cisco Nexus 3408-S Switch Data Sheet

- Cisco Nexus 3432D-S Switch Data Sheet

- Cisco Nexus 3016 Switch Data Sheet

- Cisco Nexus 3048 Switch Data Sheet

- Cisco Nexus 3064-X, 3064-T, and 3064-32T Switches Data Sheet

- Cisco Nexus 31128PQ Switch Data Sheet

- Cisco Nexus 3132C-Z Switches Data Sheet

- Cisco Nexus 3132Q, 3132Q-X, and 3132Q-XL Switches Data Sheet

- Cisco Nexus 3164Q Switch Data Sheet

- Cisco Nexus 3264Q Switch Data Sheet

- Cisco Nexus 3264C-E Switch Data Sheet

- Cisco Nexus C36180YC-R Switch Data Sheet

Data Sheets

- End-of-Sale and End-of-Life Announcement for the Cisco Nexus 34200YC-SM

- End-of-Sale and End-of-Life Announcement for the Cisco Nexus 3464C switch

- End-of-Sale and End-of-Life Announcement for the Cisco Nexus 34180YC switch

- End-of-Sale and End-of-Life Announcement for the Cisco Nexus 3264Q Switch

- End-of-Sale and End-of-Life Announcement for the Cisco Nexus 3132Q-XL

- End-of-Sale and End-of-Life Announcement for the Cisco Nexus 3132Q-X Switch

- End-of-Sale and End-of-Life Announcement for the Cisco Nexus 3164Q

- End-of-Sale and End-of-Life Announcement for the Cisco Nexus 3048 Switch

- End-of-Sale and End-of-Life Announcement for the Cisco Nexus 3408-S Switch

- End-of-Sale and End-of-Life Announcement for the Cisco Nexus 3000 Legacy Power Supply and Fans

- End-of-Sale and End-of-Life Announcement for the Cisco Nexus N3K-C3172PQ and N3K-C3172PQ-XL Switches

- End-of-Sale and End-of-Life Announcement for the Cisco N3K-C3232C

- End-of-Sale and End-of-Life Announcement for the Cisco Nexus N3K-C3172TQ and N3K-C3172TQ-XL Switches

- End-of-Sale and End-of-Life Announcement for the Cisco Nexus 31108PC-V, 31108TC-V and Nexus 3132Q-V

- End-of-Sale and End-of-Life Announcement for the Cisco Nexus 3524-X, 3548-X

End-of-Life and End-of-Sale Notices

Security Notices

Applicable to Multiple Models

- Field Notice: FN - 72150 - Nexus 9000/3000 Will Fail With SSD Read-Only Filesystem – Power Cycle Required - BIOS/Firmware Upgrade Recommended

- Field Notice: FN - 72441 - Oplink Transceivers Version 3 Are Not Qualified and Supported - Configuration Change Recommended

- Field Notice: FN - 72433 - Nexus 3000 and 9000 Switch Traffic Disruptions and Control Plane Instability - Software Upgrade Recommended

- Field Notice: FN - 72115 - Nexus Product Line: QuoVadis Root CA 2 Decommission Might Affect Smart Licensing and Smart Call Home Functionality - Software Upgrade Recommended

- Field Notice: FN - 70016 - Nexus 3000/9000 Switch Might Reload Due to Software Issue - BIOS/Firmware Upgrade Recommended

- Field Notice: FN - 70082 - Nexus 3000 Series Platforms Might Observe an eUSB Failure - Software Upgrade Recommended

Cisco Nexus 3048 Switch

- Field Notice: FN - 64233 - Nexus 3000 Series Switches That Run Release 6.0.(2)U6(x) Fail to Upgrade to Release 7.0.(x) with MD5 Sum Mismatch Error-BIOS Upgrade Required

Field Notices

- Cisco NX-OS Software DHCPv6 Relay Agent Denial of Service Vulnerability

- Cisco NX-OS Software Command Injection Vulnerability

- Cisco NX-OS Software Python Sandbox Escape Vulnerabilities

- Cisco NX-OS Software Bash Arbitrary Code Execution and Privilege Escalation Vulnerabilities

- Cisco NX-OS Software CLI Command Injection Vulnerability

- Cisco NX-OS Software External Border Gateway Protocol Denial of Service Vulnerability

- Cisco NX-OS Software MPLS Encapsulated IPv6 Denial of Service Vulnerability

- Cisco Nexus 3000 and 9000 Series Switches Port Channel ACL Programming Vulnerability

- Cisco FXOS and NX-OS Software Link Layer Discovery Protocol Denial of Service Vulnerability

- HTTP/2 Rapid Reset Attack Affecting Cisco Products: October 2023

- Cisco Nexus 3000 and 9000 Series Switches IS-IS Protocol Denial of Service Vulnerability

- Cisco NX-OS Software TACACS+ or RADIUS Remote Authentication Directed Request Denial of Service Vulnerability

- Cisco Nexus 3000 and 9000 Series Switches SFTP Server File Access Vulnerability

- Cisco NX-OS Software CLI Command Injection Vulnerability

- Vulnerabilities in Layer 2 Network Security Controls Affecting Cisco Products: September 2022

Security Advisories, Responses and Notices

Release and Compatibility

- Cisco Nexus 3000 Series NX-OS Release Notes, Release 9.3(14)

- Cisco Nexus 3000 Series NX-OS Release Notes, Release 10.3(6)M

- Cisco Nexus 3000 Series NX-OS Release Notes, Release 10.5(1)F

- Recommended Cisco NX-OS Releases for Cisco Nexus 3000, 3100, 3200, and 3500 Series Switches

- Cisco Nexus 3000 Series NX-OS Release Notes, Release 10.2(8)M

- Cisco Nexus 3000 Series NX-OS Release Notes, Release 10.3(5)M

- Cisco Nexus 3000 Series NX-OS Release Notes, Release 10.4(3)F

- Cisco Nexus 3000 Series NX-OS Release Notes, Release 10.2(7)M

- Cisco Nexus 3000 Series NX-OS Release Notes, Release 9.3(13)

- Cisco Nexus 3000 Series NX-OS Release Notes, Release 10.4(2)F

- Cisco Nexus 3000 Series NX-OS Release Notes, Release 10.3(4a)M

- Cisco Nexus 3000 Series NX-OS Release Notes, Release 10.2(6)M

- Cisco Nexus 3000 Series NX-OS Release Notes, Release 10.4(1)F

- Cisco Nexus 3000 Series NX-OS Release Notes, Release 9.3(12)

- Cisco Nexus 3000 Series NX-OS Release Notes, Release 10.3(3)F

Release Notes

- Cisco Nexus 3548 Series NX-OS Verified Scalability Guide, Release 9.3(14)

- Cisco Nexus 3600 Series NX-OS Verified Scalability Guide, Release 9.3(14)

- Cisco Nexus 3600 Series NX-OS Verified Scalability Guide, Release 10.3(6)M

- Cisco Nexus 3548 Series NX-OS Verified Scalability Guide, Release 10.3(6)M

- Cisco Nexus 3600 Series NX-OS Verified Scalability Guide, Release 10.5(1)F

- Cisco Nexus 3548 Series NX-OS Verified Scalability Guide, Release 10.5(1)F

- Cisco Nexus 3600 Series NX-OS Verified Scalability Guide, Release 10.3(5)M

- Cisco Nexus 3548 Series NX-OS Verified Scalability Guide, Release 10.3(5)M

- Cisco Nexus 3600 Series NX-OS Verified Scalability Guide, Release 10.4(3)F

- Cisco Nexus 3548 Series NX-OS Verified Scalability Guide, Release 10.4(3)F

- Cisco Nexus 3600 Series NX-OS Verified Scalability Guide, Release 10.2(7)M

- Cisco Nexus 3548 Series NX-OS Verified Scalability Guide, Release 10.2(7)M

- Cisco Nexus 3600 Series NX-OS Verified Scalability Guide, Release 9.3(13)

- Cisco Nexus 3548 Series NX-OS Verified Scalability Guide, Release 9.3(13)

- Cisco Nexus 3000 Series NX-OS Verified Scalability Guide, Release 9.3(13)

Verified Scalability Guides

Multimedia

- Cisco Nexus 9000 and 3000 Series Switch Hardware Release Support Infographic (PDF - 1 MB)

Infographics

Reference

- Command Reference BookMap1

- Cisco Nexus 3000 Series NX-OS N3K Mode Command Reference (Configuration Commands), Release 9.3(1)

- Cisco Nexus 3000 Series NX-OS N3K Mode Command Reference (Show Commands), Release 9.3(1)

- Cisco Nexus 3000 Series NX-OS N9K Mode Command Reference (Configuration Commands), Release 9.3(1)

- Cisco Nexus 3000 Series NX-OS N9K Mode Command Reference (Show Commands), Release 9.3(1)

- Cisco Nexus 3548 NX-OS Command Reference (Configuration Commands), Release 9.3(1)

- Cisco Nexus 3548 NX-OS Command Reference (Show Commands), Release 9.3(1)

- Cisco Nexus 3400-S Series NX-OS Command Reference (Configuration Commands), Release 9.2(2)

- Cisco Nexus 3400-S Series NX-OS Command Reference (Show Commands), Release 9.2(2)

- Cisco Nexus 3600 Series NX-OS Command Reference (Configuration Commands), Release 7.0(3)F3(4)

- Cisco Nexus 3600 Series NX-OS Command Reference (Show Commands), Release 7.0(3)F3(4)

- Cisco Nexus 3548 NX-OS Command Reference (Configuration Commands), Release 7.0(3)I7(4)

- Cisco Nexus 3548 NX-OS Command Reference (Show Commands), Release 7.0(3)I7(4)

- Cisco Nexus 3000 Series NX-OS N9K Mode Command Reference (Configuration Commands), Release 7.0(3)I7(4)

Command References

Notices

Licensing Guides

Open Source Documentation

Licensing Information

Install and Upgrade

- Cisco Nexus 3600 Series NX-OS Software Upgrade and Downgrade Guide, Release 10.5(x)

- Cisco Nexus 3500 Series NX-OS Software Upgrade and Downgrade Guide, Release 10.5(x)

- Cisco Nexus 9000 Series NX-OS Software Upgrade and Downgrade Guide, Release 7.x

- Cisco Nexus 3500 Series NX-OS Software Upgrade and Downgrade Guide, Release 9.3(x)

- Cisco Nexus 3500 Series NX-OS Software Upgrade and Downgrade Guide, Release 7.x

- Cisco Nexus 3600 Series NX-OS Software Upgrade and Downgrade Guide, Release 10.4(x)

- Cisco Nexus 3500 Series NX-OS Software Upgrade and Downgrade Guide, Release 10.1(x)

- Cisco Nexus 3600 Series NX-OS Software Upgrade and Downgrade Guide, Release 9.3(x)

- Cisco Nexus 3000 Series NX-OS Software Upgrade and Downgrade Guide, Release 7.x

- Cisco Nexus 3600 Series NX-OS Software Upgrade and Downgrade Guide, Release 10.1(x)

- Cisco Nexus 3500 Series NX-OS Software Upgrade and Downgrade Guide, Release 9.2(x)

- Cisco Nexus 9000 Series NX-OS Software Upgrade and Downgrade Guide, Release 6.x

- Cisco Nexus 3600 Series NX-OS Software Upgrade and Downgrade Guide, Release 9.2(x)

- Cisco Nexus 3400-S NX-OS Software Upgrade and Downgrade Guide, Release 9.3(x)

- Taxonomy for Cisco Nexus 3000 Series Part Numbers

Install and Upgrade Guides

Applicable to Multiple Models

- Upgrade Nexus 3048 NX-OS Software

- Change Nexus 3000, 3100, and 3500 NX-OS Compact Image File Size

- Upgrade Nexus 3524 and 3548 NX-OS Software

- Upgrade Nexus 3000 and 3100 NX-OS Software

Install and Upgrade TechNotes

Configuration

- Configure and Verify Maximum Transmission Unit on Nexus Platforms

- Create Topologies for Routing over Virtual Port Channel

- Understand Virtual Port Channel (vPC) Enhancements

- Configure VXLAN

- Deploy Layer3 EVPN over Segment Routing MPLS [Ospf / iBGP] in Nexus 3000

- Nexus 3000/9000: Consolidated Interface Breakout configuration

Configuration Examples and TechNotes

- Cisco Nexus 3548 Switch NX-OS Layer 2 Switching Configuration Guide, Release 10.5(x)

- Cisco Nexus 3548 Switch NX-OS System Management Configuration Guide, Release 10.5(x)

- Cisco Nexus 3600 Switch NX-OS Fundamentals Configuration Guide, Release 10.5(x)

- Cisco Nexus 3600 Switch NX-OS iCAM Configuration Guide, Release 10.5(x)

- Cisco Nexus 3600 Switch NX-OS Layer 2 Switching Configuration Guide, Release 10.5(x)

- Cisco Nexus 3600 Switch NX-OS System Management Configuration Guide, Release 10.5(x)

- Cisco Nexus 3548 Switch NX-OS Multicast Routing Configuration Guide, Release 10.5(x)

- Cisco Nexus 3600 Switch NX-OS Multicast Routing Configuration Guide, Release 10.5(x)

- Cisco Nexus 3548 Switch NX-OS Unicast Routing Configuration Guide, Release 10.5(x)

- Cisco Nexus 3600 Switch NX-OS Unicast Routing Configuration Guide, Release 10.5(x)

- Cisco Nexus 3548 Switch NX-OS Fundamentals Configuration Guide, Release 10.5(x)

- Cisco Nexus 3548 Switch NX-OS Quality of Service Configuration Guide, Release 10.5(x)

- Cisco Nexus 3548 Switch NX-OS Security Configuration Guide, Release 10.5(x)

Configuration Guides

- Cisco Nexus 3600 Switch NX-OS Programmability Guide, Release 10.5(x)

- Cisco Nexus 3600 Switch NX-OS Programmability Guide, Release 10.4(x)

- Cisco Nexus 3500 Series NX-OS Programmability Guide, Release 10.4(x)

- Cisco Nexus 9000 Series NX-OS Programmability Guide, Release 7.x

- Cisco Nexus 3600 Series NX-OS Programmability Guide, Release 10.3(x)

- Cisco Nexus 3500 Series NX-OS Programmability Guide, Release 10.3(x)

- Cisco Nexus 3500 Series NX-OS Programmability Guide, Release 10.2(x)

- Cisco Nexus 3600 Series NX-OS Programmability Guide, Release 10.2(x)

- Cisco Nexus 3600 NX-OS Programmability Guide, Release 10.1(x)

- Cisco Nexus 3500 Series NX-OS Programmability Guide, Release 10.1(x)

- Cisco Nexus 3000 Series NX-OS Programmability Guide, Release 9.3(x)

- Cisco Nexus 3000 Series NX-OS Programmability Guide, Release 9.2(x)

- Cisco Nexus 3500 Series NX-OS Programmability Guide, Release 7.x

- Cisco Nexus 3600 NX-OS Programmability Guide, Release 9.3(x)

- Cisco Nexus 3500 Series NX-OS Programmability Guide, Release 9.3(x)

Programming Guides

Troubleshooting

- Cisco Nexus 3548 Series NX-OS System Messages Reference, Release 10.3(5)M

- Cisco Nexus 3600 NX-OS System Messages Reference, Release 10.3(5)M

- Cisco Nexus 3000 Series NX-OS System Messages Reference, Release 9.2(4)

- Cisco Nexus 3600 NX-OS System Messages Reference, Release 9.3(3)

- Cisco Nexus 3548 NX-OS System Messages Reference, Release 9.3(3)

- Cisco Nexus 3400 NX-OS System Messages Reference, Release 9.3(3)

- Cisco Nexus 3000 Series NX-OS System Messages Reference, Release 9.3(3)

- Cisco Nexus 3600 Series NX-OS System Messages Reference, Release 9.3(1)

- Cisco Nexus 3548 Series NX-OS System Messages Reference, Release 9.3(1)

- Cisco Nexus 3000 Series NX-OS System Messages Reference, Release 9.3(1)

- Cisco Nexus 3400 NX-OS System Messages Reference, Release 9.2(2t)

- Cisco Nexus 3600 NX-OS System Messages Reference, Release 9.2(1)

- Cisco Nexus 3548 Series NX-OS System Messages Reference, Release 9.2(1)

- Cisco Nexus 3000 Series NX-OS System Messages Reference, Release 9.2(1)

- Cisco Nexus 3000 Series NX-OS System Messages Reference, Release 7.0(3)I3(1)

Error and System Messages

Cisco Nexus 3164Q Switch (Running Cisco Nexus 9000 Series NX-OS Software Release 6.1(2)I2(2a) and Higher)

Troubleshooting Guides

Cisco Nexus 3548-X Switch

- Understand Cyclic Redundancy Check Errors on Nexus Switches

- Nexus 3500 Output Drops and Buffer QoS

- Nexus 3000 average memory utilization

- Develop, Debug and Deploy NX-SDK Python Application in Nexus 3000/9000 Switches

- Per-VLAN (SVI) counter feature on Nexus 3000 platform

- Configure and troubleshoot PTP in Nexus 3000

- Nexus 3500 Series Switch Platform System Health Check Process

- Nexus 7000 Series Switch Problem with Remote User Authentication via SSH with a TACACS account

Troubleshooting TechNotes
