Information About Dynamic FCoE Using FabricPath

Fibre Channel over Ethernet (FCoE) enables I/O consolidation. It permits both LAN and SAN traffic to coexist on the same switch and the same wire. This feature enables you to consolidate multiple separate networks into a single converged infrastructure.

Key values of I/O consolidation using traditional FCoE are as follows:

- Elimination of separate network infrastructures for SAN and LAN traffic.
- Reduction in hardware requirements, such as cabling and server interface cards (NICs and HBAs), which lowers capital expense.
- Reduction in power and cooling requirements because there are fewer physical assets.
- Increased deployment agility for multiprotocol networks, which preserves long-term investments while preparing for future uncertainty in protocol needs.

By using FabricPath Ethernet technology, you can take FCoE consolidation even further:

- Create a logical, rather than physical, SAN A/B separation.
- Efficiently load balance multiprotocol traffic within the data center.
- Dynamically establish relationships between switches, reducing the possibility of human error during configuration.
- Improve high-availability percentages as the scale increases.

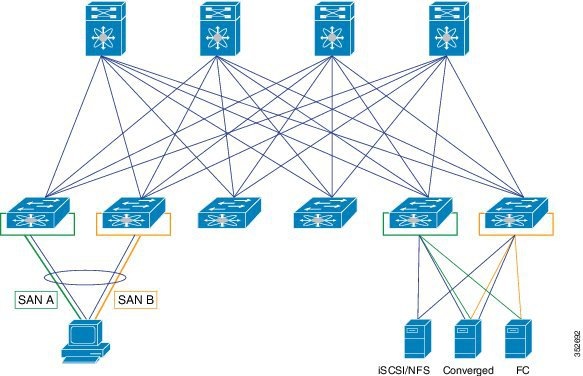

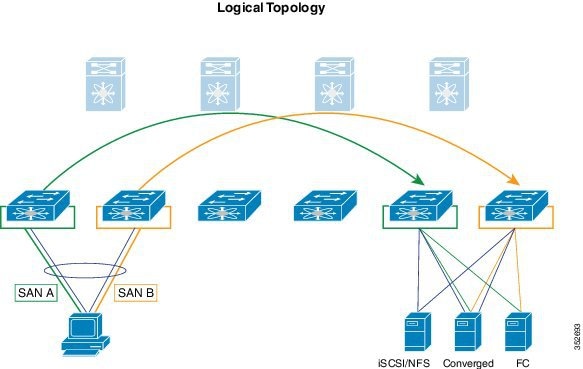

The FabricPath architecture provides an inherent multipath capability with redundancy to handle node failures. Fabric-level redundancy is provided through a double fabric model (SAN A/SAN B). The separation of the two SANs is logically implemented as two different VSANs that map to two different VLANs (VLAN A and VLAN B). Fibre Channel traffic in SAN A becomes the FCoE traffic in VLAN A, Fibre Channel traffic in SAN B becomes the FCoE traffic in VLAN B, and the LAN traffic is carried on one or more additional VLANs over the converged Ethernet infrastructure. In this logical environment, the VSAN A/VSAN B configuration protects against fabric-wide control plane failures.

The traditional method of connecting hosts to two separate SANs is still supported with the FCoE over FabricPath architecture. The host is connected to two different leaf nodes that host disjoint sets of VSANs. Beyond these leaf nodes, the fabric is converged on the same infrastructure, but the host continues to see two SAN fabrics.

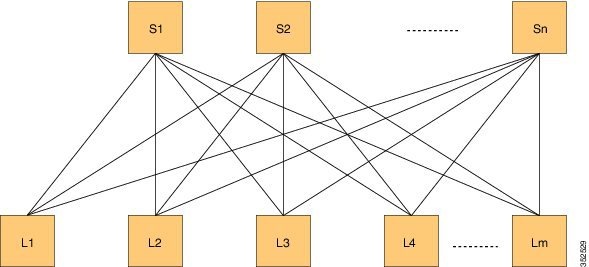

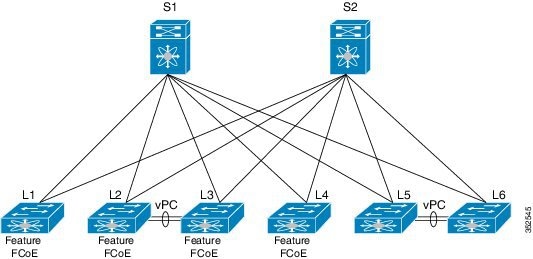

The following figure shows a FabricPath topology with n spines (S) and m leafs (L). The m leafs communicate with each other through the n spines using FabricPath encapsulation.

FCoE creates an overlay of FCoE virtual links on top of the underlying Ethernet topology, irrespective of how that Ethernet topology is constructed and which protocol is used to compute the MAC address routes.

In a dynamic FCoE environment, the leafs act as FCoE Forwarder (FCF) switches, and the traffic between them is forwarded through transparent spines.

FCoE hosts and FCoE storage devices are connected to a FabricPath topology through the leaf switches. In this configuration, only the leaf switches perform FCoE forwarding (only the leaf switches behave as FCFs); the spine switches just forward MAC-in-MAC encapsulated Ethernet frames based on the outer destination MAC address.
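The division of labor between leafs and spines can be illustrated with a minimal sketch. It is a toy model, not switch behavior taken from a specification: the `EncapsulatedFrame` type, the `spine_forward` function, and the address and port strings are all hypothetical names chosen for illustration. The point it shows is that a spine looks only at the outer destination address and never inspects the FCoE payload.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EncapsulatedFrame:
    """Toy MAC-in-MAC frame; all field names are hypothetical."""
    outer_dst: str        # outer destination MAC (identifies the egress leaf)
    outer_src: str        # outer source MAC (identifies the ingress leaf)
    inner_payload: bytes  # original Ethernet frame, possibly carrying FCoE


def spine_forward(frame: EncapsulatedFrame, outer_table: dict) -> str:
    """Choose an egress port using only the outer destination address."""
    return outer_table[frame.outer_dst]


# Hypothetical forwarding table on one spine: outer MAC -> egress port.
outer_table = {
    "02:00:00:00:00:01": "port-to-leaf-1",
    "02:00:00:00:00:02": "port-to-leaf-2",
}

frame = EncapsulatedFrame(
    outer_dst="02:00:00:00:00:02",
    outer_src="02:00:00:00:00:01",
    inner_payload=b"opaque FCoE frame",
)
egress_port = spine_forward(frame, outer_table)
```

Because `spine_forward` never reads `inner_payload`, the same spine logic serves FCoE and LAN traffic alike, which is why the spines can remain transparent to the SAN.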

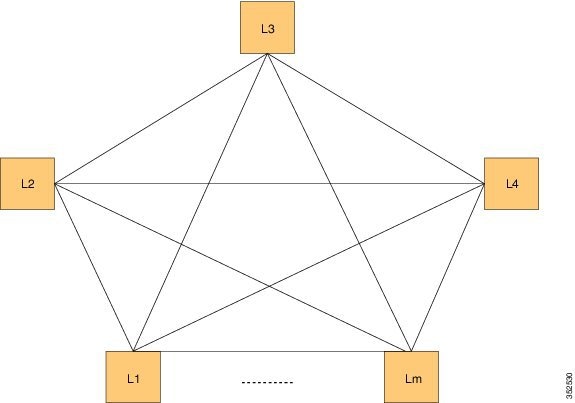

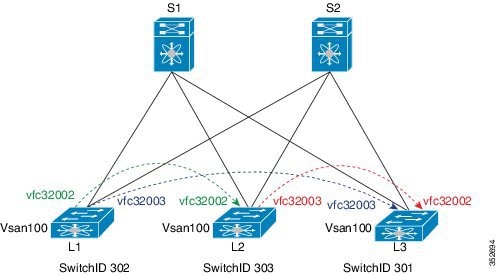

The following figure shows the logical FCoE overlay topology of VE_Port to VE_Port virtual links on a FabricPath topology.

Only the FCFs, which are implemented by the leaf switches, are part of this overlay topology. Fabric Shortest Path First (FSPF) sees this topology for each FCoE VLAN. FSPF computes which virtual link to use to forward an FCoE frame based on the Domain_ID portion of the frame's destination address (D_ID). A virtual link is uniquely identified by the pair of MAC addresses associated with the two VE_Ports that it logically connects. Identifying the virtual link is therefore equivalent to identifying which MAC addresses to use for the FCoE encapsulation on the transport network.
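As a rough illustration of that lookup, the sketch below assumes the standard 24-bit Fibre Channel address layout, in which the most significant byte is the Domain_ID. The route table stands in for the result of an FSPF computation; the `VirtualLink` type, the `select_virtual_link` function, and every MAC address and domain value are hypothetical.

```python
from typing import NamedTuple


class VirtualLink(NamedTuple):
    """A virtual link, identified by the MAC addresses of its two VE_Ports."""
    local_mac: str
    remote_mac: str


def domain_id(d_id: int) -> int:
    """Return the Domain_ID: the most significant byte of a 24-bit FC address."""
    return (d_id >> 16) & 0xFF


def select_virtual_link(d_id: int, routes: dict) -> VirtualLink:
    """Look up the virtual link that leads toward the destination domain."""
    return routes[domain_id(d_id)]


# Hypothetical per-VLAN route table on one leaf: destination domain -> link.
routes = {
    0x0A: VirtualLink("02:00:00:00:00:01", "02:00:00:00:00:0a"),
    0x0B: VirtualLink("02:00:00:00:00:01", "02:00:00:00:00:0b"),
}

# A frame addressed to D_ID 0x0B0102 belongs to domain 0x0B.
link = select_virtual_link(0x0B0102, routes)
```

The selected `link` directly yields the source and destination MAC addresses for the FCoE encapsulation, which is the equivalence the paragraph above describes.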

Let Lm denote the number of leafs on which the feature is enabled. The feature might not be enabled on all m leafs. The FCoE mesh consists of the leafs where FCoE or FabricPath is enabled.
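To get a feel for how the overlay grows with Lm, the following sketch enumerates the virtual links under the assumption that the mesh is a full mesh, meaning one VE_Port to VE_Port link per pair of enabled leafs in each FCoE VLAN. The text implies this but does not state it outright, and the `overlay_links` function and leaf names are hypothetical.

```python
from itertools import combinations


def overlay_links(enabled_leafs):
    """List every leaf pair, assuming one virtual link per pair (full mesh)."""
    return list(combinations(sorted(enabled_leafs), 2))


# Hypothetical fabric: four leafs, with the feature enabled on three (Lm = 3).
enabled = ["L1", "L2", "L4"]
links = overlay_links(enabled)
link_count = len(links)  # Lm * (Lm - 1) / 2 under the full-mesh assumption
```

Under this assumption the number of links per FCoE VLAN is Lm(Lm - 1)/2, so it grows quadratically with the number of enabled leafs.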

SAN A/B Separation

In the previous figure, the physical connectivity for the topology follows typical leaf/spine Clos architectural best practices. SAN A and SAN B are physically isolated at the Top of Rack (ToR) switches. Once the traffic enters the FabricPath network, the storage traffic is logically separated across the network (see the following figure) until it is physically separated once more at the storage device edge.

Dynamic FCoE gains the additional redundancy that is inherent in the FabricPath network by using the increased spine connectivity. A larger network with a large number of spines means increased reliability and stability for the storage network. This is achieved while retaining the best-practice requirements for storage environments.

Load-Balancing FCoE Traffic on a Dynamic VFC

FabricPath provides redundant paths between a source and a destination. Because FCoE traffic traverses a FabricPath network that contains one or more FCoE and non-FCoE nodes (spines and leafs), you must ensure in-order delivery through proper port-channel hashing across the redundant paths. All FabricPath nodes have port-channel hashing enabled, and the hash includes the exchange ID. Traffic from a single flow therefore always traverses the same set of nodes through the network, which maintains in-order delivery.
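The idea of exchange-based hashing can be sketched as follows. This is an illustration only: the text says the hash includes the exchange ID but does not name the hash function or the other fields, so the use of CRC32 and the inclusion of the source and destination IDs are assumptions, and `pick_path` and the path names are hypothetical.

```python
import zlib


def pick_path(s_id: int, d_id: int, ox_id: int, paths: list) -> str:
    """Map one exchange to one path so its frames stay in order.

    CRC32 is a stand-in hash; the real switch hash is not specified here.
    """
    key = (
        s_id.to_bytes(3, "big")     # 24-bit source FC address (assumed input)
        + d_id.to_bytes(3, "big")   # 24-bit destination FC address (assumed input)
        + ox_id.to_bytes(2, "big")  # 16-bit exchange ID
    )
    return paths[zlib.crc32(key) % len(paths)]


# Hypothetical set of redundant paths through four spines.
paths = ["via-S1", "via-S2", "via-S3", "via-S4"]

# Every frame of the same exchange hashes to the same path.
first = pick_path(0x0A0101, 0x0B0102, 0x1234, paths)
second = pick_path(0x0A0101, 0x0B0102, 0x1234, paths)
```

Because the hash is a pure function of the exchange's identifiers, all frames of one exchange follow one path, while different exchanges can be spread across the redundant paths.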

Supported Dynamic FCoE Using FabricPath Topologies

The supported topologies for Dynamic FCoE Using FabricPath are as follows:

- FCoE devices that are directly connected to an FCF leaf
- Traditional FCoE VE_Port connectivity to an FCF leaf
- Legacy FC fabric connected to an FCF leaf
- NPV and FCoE NPV devices that are connected to an FCF leaf
- Native FC devices that are directly connected to an FCF leaf

Note: Although physical separation is possible through a multi-topology configuration of FabricPath, it is not required.
