2-Node 2-Room Use Case

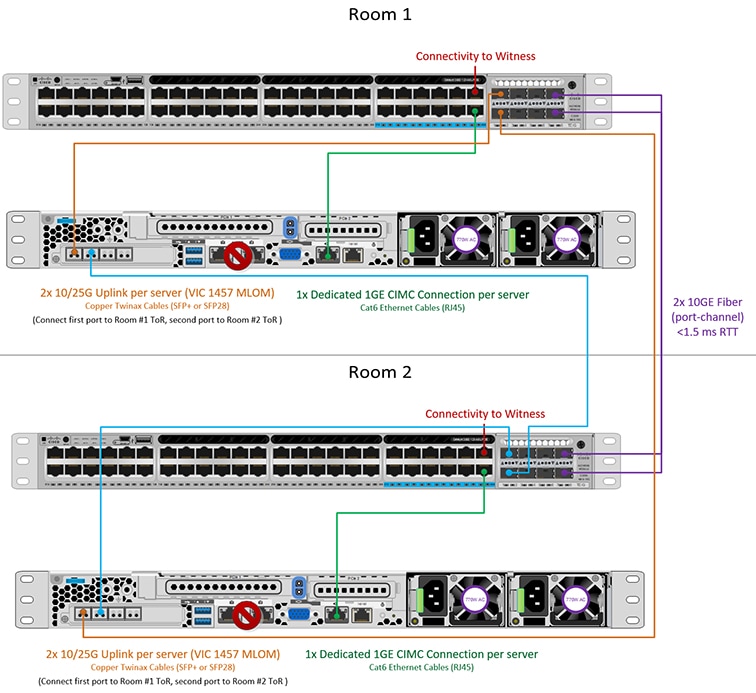

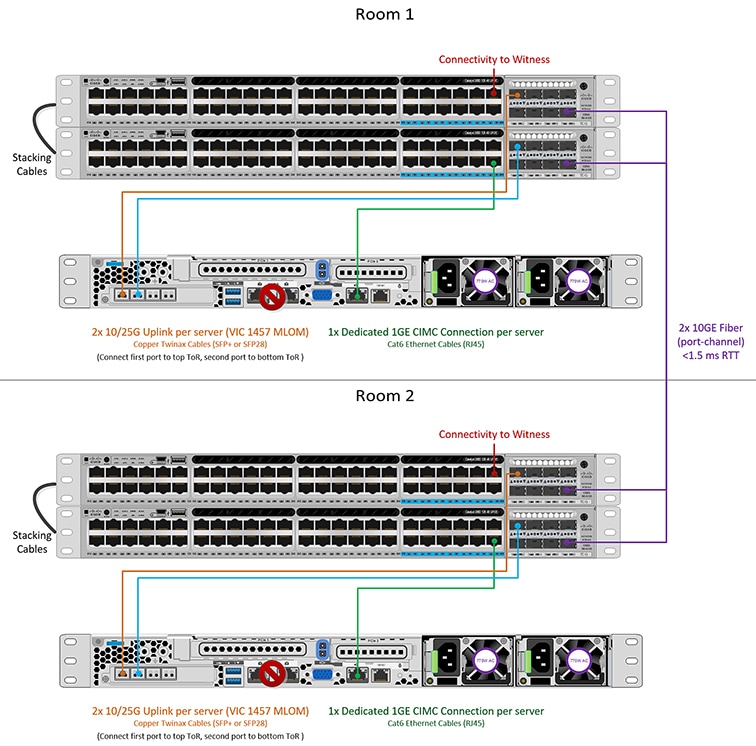

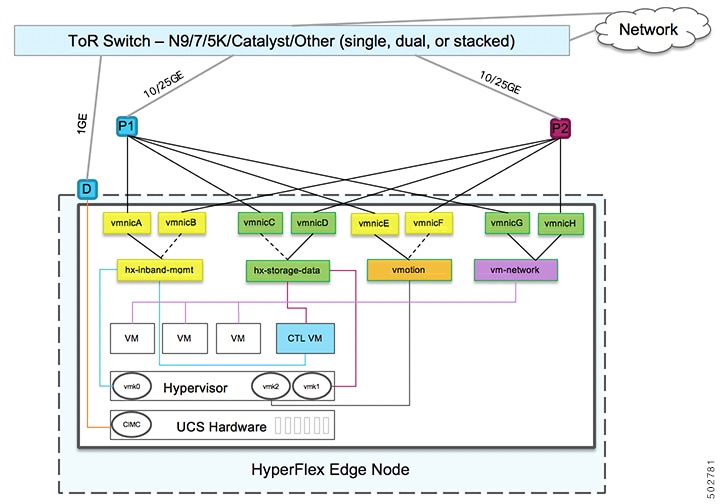

HyperFlex Edge offers many flexible deployment options depending on workload requirements. Standard topologies, covered in Select your 2-Node Network Topology and Selecting your 3- or 4-Node Network Topology, include single switch, dual switch, 1GE, 10GE, and 25GE options. Some designs call for placing a two-node cluster “stretched” across two rooms within a building or a campus. This type of network topology is referred to below as a 2-node 2-room design, to distinguish it from a full HyperFlex Stretched Cluster deployment.

This design is sometimes chosen in an attempt to boost cluster availability and the cluster's ability to tolerate certain failure scenarios. Cisco does not currently recommend deploying this type of topology and instead recommends a properly designed 2-node cluster within the same rack. The following are some reasons why this topology is not considered a Cisco recommended best practice:

- Power failures can be mitigated with reliable power and the use of an uninterruptible power supply (UPS).

- It introduces more single points of failure: extra switching infrastructure with inter-switch links that can become oversubscribed and that require a proper QoS implementation.

- It complicates upgrade procedures, requiring careful planning to upgrade all components end to end.

- It does not provide the same level of availability for mission-critical applications as a HyperFlex Stretched Cluster (for more information, see the Cisco HyperFlex Systems Stretched Cluster Guide, Release 4.5). HyperFlex Edge is designed to run Edge workloads and does not provide the same performance, data resiliency, and availability guarantees. Deploy a proper stretched cluster when running mission-critical applications.

- It requires 10GE end to end, a maximum RTT of 1.5 ms, and independent network paths to Intersight or a local witness, as described in further detail below.

- It increases the overall complexity of an otherwise simple design.

A 2-node 2-room topology could unintentionally reduce availability by adding unnecessary complexity to the environment, when the underlying risks could be mitigated through simpler means (for example, dual redundant switches or redundant power/UPS).

Despite these best-practice recommendations, deploying HyperFlex Edge in this topology is possible and fully supported. The remainder of this chapter covers the requirements and details for deploying such a topology.
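The headline requirements listed above (10GE end to end, a maximum RTT of 1.5 ms, and independent network paths to Intersight or a local witness) can be captured in a simple planning check. The following is a minimal sketch, not a Cisco tool: the `TwoRoomDesign` class, its field names, and the `check_design` function are hypothetical names introduced here for illustration, and the input values are assumed to come from your own measurements and network diagrams.

```python
from dataclasses import dataclass
from typing import List

# Thresholds taken from the requirements stated in this section.
MIN_LINK_SPEED_GBPS = 10.0  # 10GE end to end
MAX_RTT_MS = 1.5            # maximum round-trip time


@dataclass
class TwoRoomDesign:
    """Hypothetical description of a planned 2-node 2-room deployment."""
    slowest_link_gbps: float         # slowest link on the path between the nodes
    inter_room_rtt_ms: float         # measured round-trip time between the rooms
    independent_witness_paths: bool  # each room reaches Intersight or the local witness independently


def check_design(design: TwoRoomDesign) -> List[str]:
    """Return a list of unmet requirements; an empty list means none were found."""
    problems = []
    if design.slowest_link_gbps < MIN_LINK_SPEED_GBPS:
        problems.append(
            f"Slowest link is {design.slowest_link_gbps} Gbps; 10GE is required end to end."
        )
    if design.inter_room_rtt_ms > MAX_RTT_MS:
        problems.append(
            f"RTT is {design.inter_room_rtt_ms} ms; the maximum is {MAX_RTT_MS} ms."
        )
    if not design.independent_witness_paths:
        problems.append(
            "Network paths to Intersight or the local witness are not independent."
        )
    return problems


if __name__ == "__main__":
    # Example values are made up for illustration only.
    plan = TwoRoomDesign(slowest_link_gbps=10, inter_room_rtt_ms=2.0,
                         independent_witness_paths=True)
    for problem in check_design(plan):
        print(problem)
```

A check like this only covers the three numeric and structural requirements named in this section; it says nothing about QoS on the inter-switch links or the other considerations detailed later in the chapter.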

Note: 2-node 2-room topologies will never be permitted to expand beyond two converged nodes. Expansion to larger clusters is possible for other 10GE+ topologies as outlined in earlier chapters. Do not deploy this topology if cluster expansion may be required in the future.
