Neocloud consumption and deployment models

Neocloud providers use several business models to deliver AI infrastructure to enterprises. These offerings generally fall into three approaches, each striking a different balance between scalability, cost, performance, and data locality.

1. Reserved instances (Dedicated or shared AI IaaS)

This model is designed for organizations with predictable, long-running AI workloads, such as large-scale model training or sustained inference.

- Dedicated AI clusters: Enterprises can commit to entire GPU clusters allocated to a single customer for a fixed term (typically 1–3 years).

- Performance consistency: Dedicated AI stacks give organizations guaranteed capacity and consistent performance.

- Cost efficiency: For steady-state workloads, this model costs significantly less than on-demand pricing.
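
The cost trade-off can be made concrete with a break-even calculation. The sketch below is illustrative only: it assumes a reserved cluster is billed for every hour of the term whether or not it is used, while on-demand capacity is billed only for hours actually consumed. The function names and the hourly rates are hypothetical and do not reflect any provider's pricing.

```python
def break_even_utilization(reserved_hourly: float, on_demand_hourly: float) -> float:
    """Return the fraction of term hours a GPU must be busy for a
    reservation to cost the same as on-demand usage.

    Assumption: reserved capacity is paid for all hours in the term,
    on-demand capacity only for hours actually used.
    """
    if reserved_hourly <= 0 or on_demand_hourly <= 0:
        raise ValueError("hourly rates must be positive")
    return reserved_hourly / on_demand_hourly


def cheaper_option(reserved_hourly: float, on_demand_hourly: float,
                   expected_utilization: float) -> str:
    """Pick the cheaper model for an expected utilization between 0 and 1."""
    if not 0.0 <= expected_utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    threshold = break_even_utilization(reserved_hourly, on_demand_hourly)
    return "reserved" if expected_utilization > threshold else "on-demand"


if __name__ == "__main__":
    # Hypothetical rates per GPU-hour, chosen only for illustration.
    RESERVED_RATE = 2.0
    ON_DEMAND_RATE = 4.0

    print(break_even_utilization(RESERVED_RATE, ON_DEMAND_RATE))
    print(cheaper_option(RESERVED_RATE, ON_DEMAND_RATE, 0.8))  # sustained training
    print(cheaper_option(RESERVED_RATE, ON_DEMAND_RATE, 0.3))  # occasional experiments
```

With these made-up rates, a reservation pays off only when the cluster is busy more than half of the term, which is why the model suits predictable, long-running workloads.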

2. On-demand instances (Shared public AI IaaS)

This "pay-as-you-go" model allows enterprises to consume AI-optimized compute resources, such as GPUs and TPUs, from shared pools.

- Elastic AI capacity: Designed for experimentation, development, testing, or bursty workloads where demand is inconsistent.

- Maximum flexibility: Enterprises pay only for the resources consumed (by the hour or minute) without long-term commitments.

- Cloud operating model: The provider manages and secures the underlying multi-tenant infrastructure, allowing the customer to focus on their specific AI software stack.

3. Serverless platforms (Managed AI PaaS)

In this model, the provider abstracts infrastructure management away entirely, so enterprises consume AI capabilities through managed platforms and APIs.

- Operational simplicity: The provider handles all provisioning, scaling, and lifecycle management.

- Usage-based metrics: Customers pay based on execution time, requests, or tokens, which makes this model well suited to application integration and elastic scaling.

- Inference focus: This approach is well-suited for deploying models into production environments where simplicity is a priority.
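
To illustrate how usage-based billing differs from paying for instance hours, the following sketch estimates a monthly bill under each scheme. It is a rough model under stated assumptions: the per-token price, the hourly GPU rate, the traffic figures, and both function names are hypothetical, and real platforms may also meter requests or execution time.

```python
def token_based_monthly_cost(requests_per_month: int,
                             tokens_per_request: int,
                             price_per_million_tokens: float) -> float:
    """Estimate a serverless bill when the provider charges per token."""
    total_tokens = requests_per_month * tokens_per_request
    return total_tokens / 1_000_000 * price_per_million_tokens


def instance_based_monthly_cost(hourly_rate: float, gpus: int,
                                hours_running: float) -> float:
    """Estimate a bill when the customer pays for GPU instance hours."""
    return hourly_rate * gpus * hours_running


if __name__ == "__main__":
    # Hypothetical workload: 1M requests per month, 500 tokens each,
    # priced at 2.00 per million tokens.
    serverless = token_based_monthly_cost(1_000_000, 500, 2.0)

    # Hypothetical alternative: one GPU kept running all month
    # (about 730 hours) at 4.00 per hour.
    instance = instance_based_monthly_cost(4.0, 1, 730)

    print(f"serverless: {serverless:.2f}")
    print(f"instance:   {instance:.2f}")
```

The token-based bill scales with traffic and drops to zero when there are no requests, whereas the instance-based bill depends only on how long the capacity is kept running.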

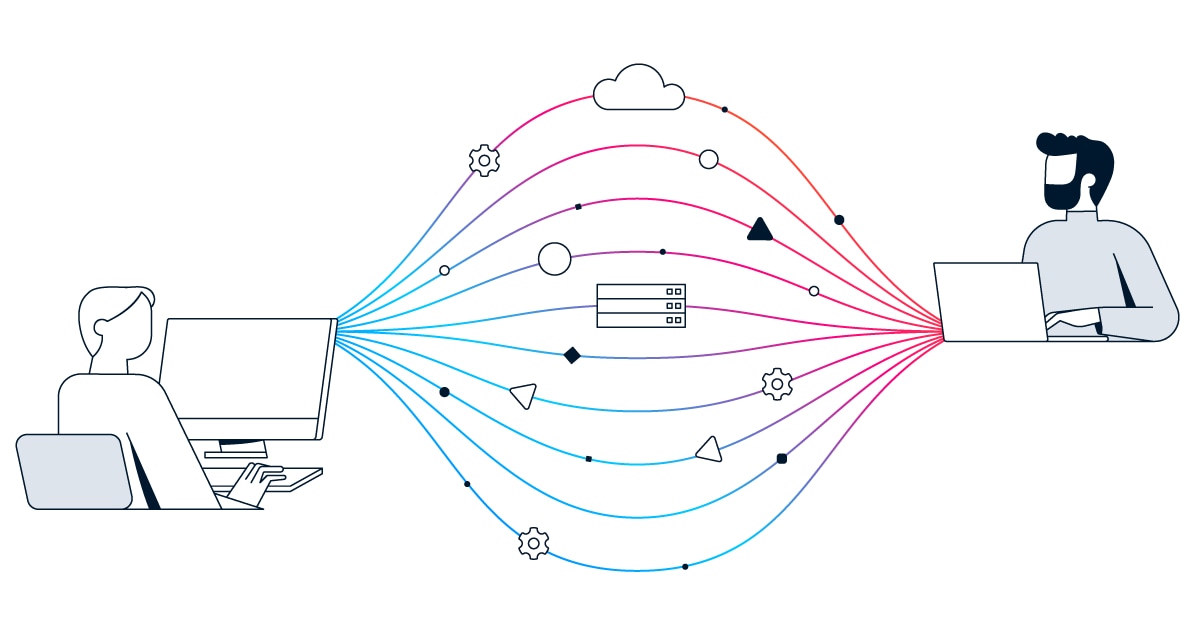

The hybrid integration layer

Regardless of the consumption model chosen, hybrid AI extensions remain a critical deployment consideration. Neocloud environments must be integrated with existing enterprise environments through secure WAN or SD-WAN connectivity. This integration connects on-premises corporate sites with cloud-based AI clusters, enabling secure hybrid model development and data movement between them.