Upgrade SD-WAN Controllers with vManage GUI or CLI


Bias-Free Language

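The documentation set for this product strives to use bias-free language. For the purposes of this documentation set, bias-free is defined as language that does not imply discrimination based on age, disability, gender, racial identity, ethnic identity, sexual orientation, socioeconomic status, and intersectionality. Exceptions may be present in the documentation due to language that is hardcoded in the user interfaces of the product software, language used based on RFP documentation, or language that is used by a referenced third-party product. Learn more about how Cisco is using Inclusive Language.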

About This Translation

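Cisco has translated this document using a combination of machine and human technology to offer users worldwide support content in their own language. Note that even the best machine translation is not as accurate as one done by a professional translator. Cisco Systems, Inc. assumes no responsibility for the accuracy of these translations and recommends always consulting the original English document (link provided).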


Introduction

This document describes the procedure to upgrade Software-Defined Wide Area Network (SD-WAN) controllers.

Prerequisites

Requirements

Cisco recommends that you have knowledge of these topics:

- Cisco Software-Defined Wide Area Network (SD-WAN)

- Cisco Software Central

- Downloading controller software from software.cisco.com

- Running the AURA script before the upgrade: CiscoDevNet/sure: SD-WAN Upgrade Readiness Experience

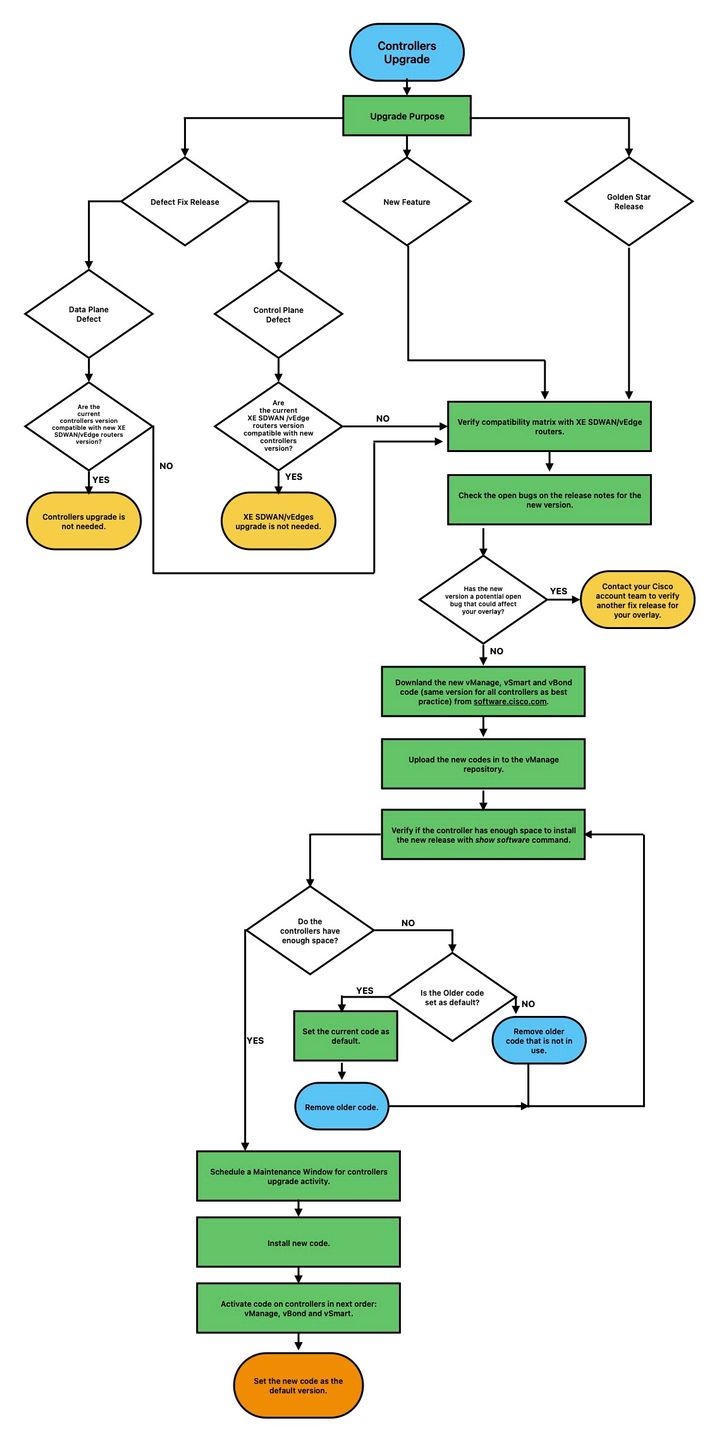

There are several reasons to plan a controller upgrade, for example:

- A new release with new features.

- Fixes for known caveats/bugs.

- The current release has been deferred.

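Note: If your release has been deferred, upgrade to a gold-star release as soon as possible. Deferred releases are not recommended on production controllers because of known defects.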

When you upgrade the controllers, consider this information:

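- Review the release notes for the SD-WAN controllers.

- Verify the Cisco vManage upgrade paths.

- Verify that the Cisco SD-WAN controllers meet the recommended compute resources.

- Check the end-of-life and end-of-sale notices for SD-WAN products.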

Note: Upgrade the SD-WAN controllers in this order: vManage > vBonds > vSmarts.
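If you track controllers in an inventory, a small script can sort it into this order before you plan the maintenance window. This is a minimal sketch. The `upgrade_sequence` helper, the role strings, and the hostnames are hypothetical, and the script does not talk to vManage.

```python
# Hypothetical helper: sort a controller inventory into the upgrade order
# vManage > vBond > vSmart. Role names are matched case-insensitively.
UPGRADE_ORDER = {"vmanage": 0, "vbond": 1, "vsmart": 2}


def upgrade_sequence(inventory):
    """Return (hostname, role) pairs in the required upgrade order.

    Controllers with the same role keep their original relative order,
    because Python's sort is stable. Unknown roles raise ValueError.
    """
    for hostname, role in inventory:
        if role.lower() not in UPGRADE_ORDER:
            raise ValueError(f"unknown controller role {role!r} for {hostname}")
    return sorted(inventory, key=lambda item: UPGRADE_ORDER[item[1].lower()])


# Example inventory (hypothetical hostnames)
controllers = [
    ("vsmart-1", "vSmart"),
    ("vbond-1", "vBond"),
    ("vmanage-1", "vManage"),
    ("vsmart-2", "vSmart"),
]
for host, role in upgrade_sequence(controllers):
    print(host, role)  # vmanage-1, then vbond-1, then vsmart-1 and vsmart-2
```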

Components Used

The information in this document is based on these software versions:

- Cisco vManage 20.12.6 and 20.15.4.1

- Cisco vBond and vSmart 20.12.6 and 20.15.4.1

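The information in this document was created from the devices in a specific lab environment. All of the devices used in this document started with a cleared (default) configuration. If your network is live, ensure that you understand the potential impact of any command.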

Pre-Checks Before the Controller Upgrade

Back Up vManage

- If vManage is cloud-hosted, confirm that a recent backup has been taken, or start the config-db backup described in the next step.

- You can view the current backups and trigger an on-demand snapshot from the SSP portal. Find more guidance here.

- If vManage is on-premises:

  - Take a config-db backup.

  - Take virtual machine snapshots of all controllers.

vManage# request nms configuration-db backup path /home/admin/db_backup

successfully saved the database to /home/admin/db_backup.tar.gz

- If on-premises, collect the show running-config output and save it locally.

- If on-premises, make sure that you know the configuration-db (neo4j) username and password, and note the exact current version.

If you need help retrieving the configuration-db credentials, contact Cisco TAC.

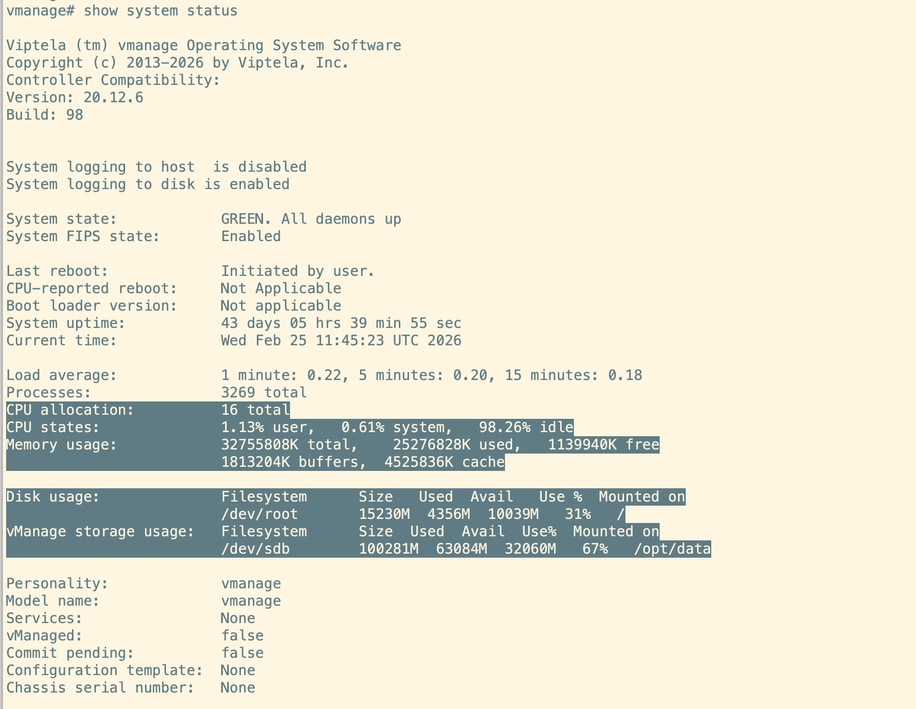

System Compute on vManage

Note: This check also applies to vBonds and vSmarts.

On the CLI of each vManage node, run the show system status command. Ensure that each vManage node has the required compute resources. Refer to the compute guide.

Run AURA Checks

- Download and run AURA by following the steps at CiscoDevNet/sure: SD-WAN Upgrade Readiness Experience.

- For detailed steps, refer to this guide: Configure Aura Deployment Before Upgrade.

- Open a TAC SR to resolve any failed checks in the AURA report.

- Starting with 20.11.x, vManage runs AURA automatically when you activate an image from the vManage UI.

vManage Disk Space Utilization

Before you start the upgrade, verify that disk utilization is at or below 60% on all three critical partitions: /boot, /rootfs.rw, and /opt/data.

To free space, find and delete unnecessary files that users copied to the system, or uncompressed log files.

Delete any admin-tech files, heap dumps, Neo4j backups, thread dumps, or temporary files that occupy disk space.

If you are not sure which files are safe to delete, open a Cisco TAC case for help.
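You can check the 60% threshold on saved `df -h` output with a short parser instead of reading it by eye. This is a minimal sketch that assumes the standard `df -h` layout with one filesystem per line: Filesystem, Size, Used, Avail, Use%, Mounted on. `over_threshold` is a hypothetical helper, and the `/opt/data` row in the sample is made-up data for illustration.

```python
# Minimal sketch: report watched partitions whose Use% is above a threshold,
# given the text of `df -h` (one filesystem per line).
CRITICAL_MOUNTS = ("/boot", "/rootfs.rw", "/opt/data")


def over_threshold(df_output, threshold=60, mounts=CRITICAL_MOUNTS):
    """Return {mount_point: use_percent} for watched mounts above threshold."""
    flagged = {}
    for line in df_output.splitlines():
        fields = line.split()
        if len(fields) < 6:
            continue  # blank, wrapped, or malformed line
        percent = fields[4].rstrip("%")
        if not fields[4].endswith("%") or not percent.isdigit():
            continue  # header row ("Use%") or unexpected format
        mount = fields[5]
        if mount in mounts and int(percent) > threshold:
            flagged[mount] = int(percent)
    return flagged


# Sample input; the /opt/data row is made-up data for illustration.
SAMPLE = """Filesystem Size Used Avail Use% Mounted on
/dev/sda1 2.5G 232M 2.3G 10% /boot
/dev/sdb 100G 70G 30G 70% /opt/data
/dev/loop0 139M 139M 0 100% /rootfs.ro
"""

print(over_threshold(SAMPLE))  # {'/opt/data': 70}
```

/rootfs.ro is not on the watch list, so its 100% usage is ignored. This matches the exception for /rootfs.ro in this document.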

Ensure That Send to Controllers/Send to vBond Is Complete

On the vManage UI, navigate to Configuration > Certificates > Controllers, and then choose Send to vBond.

Check the vManage Statistics Collection Interval

Cisco recommends that you set the Statistics Collection Interval in Administration > Settings to the default timer of 30 minutes.

Note: Cisco recommends attaching vSmarts and vBonds to vManage templates before the upgrade.

Verify NMS Diagnostics on All vManage Nodes

Run the request nms all diagnostics command and ensure that Nping succeeds for all NMS services. In a vManage cluster, run these checks on every vManage node:

Note: In a 6-node vManage cluster, configuration-db runs on only 3 of the nodes.

Review the diagnostics for configuration-db:

Ensure that the configuration-db attributes are retrieved successfully.

Ensure that all vManage nodes are listed for both "neo4j" and "system" in the Neo4j cluster status.

Ensure that schema validation succeeds and that no Neo4j node is quarantined.

Review the entire output. If you find any errors or failures, contact TAC before you proceed with the upgrade.
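If you save the output of request nms all diagnostics to a text file, a short script can pre-screen it for the failure signatures this section asks you to look for. This is a minimal sketch. It assumes the output format shown in the Troubleshoot section of this document: the `TCP connection attempts: ... | Failed: N` Nping summary, the JSON `check_result` field, and the quarantine message. `diagnostics_problems` is a hypothetical helper, not a Cisco tool, and a clean result does not replace reading the full output.

```python
import re

# Patterns are based on the sample diagnostics output in this document;
# adjust them if your release prints different text.
NPING_SUMMARY = re.compile(
    r"TCP connection attempts: (\d+) \| Successful connections: (\d+) \| Failed: (\d+)"
)
CHECK_RESULT = re.compile(r'"check_result":\s*"([A-Z_]+)"')
QUARANTINE_HEADER = "Check if Neo4j Nodes are Quarantined"
QUARANTINE_CLEAN = "None of the neo4j nodes is quarantined"


def diagnostics_problems(output):
    """Return a list of human-readable problems found in the diagnostics text."""
    problems = []
    for attempts, _successful, failed in NPING_SUMMARY.findall(output):
        if int(failed) > 0:
            problems.append(f"Nping: {failed} of {attempts} TCP handshakes failed")
    for result in CHECK_RESULT.findall(output):
        if result != "SUCCESSFUL":
            problems.append(f"configuration-db schema check: {result}")
    if QUARANTINE_HEADER in output and QUARANTINE_CLEAN not in output:
        problems.append("quarantine check did not report a clean result")
    return problems
```

For example, `diagnostics_problems(open("diag.txt").read())` returns an empty list when no known failure signature is present. The file name is hypothetical.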

Check Disk Space Usage on vManage Nodes

Ensure that no disk partition except /rootfs.ro is more than 60% used. Verify this on all vManage nodes.

Verify Disk Space on vSmart and vBond

From vShell, use the df -kh | grep boot command to determine the disk size.

controller:~$ df -kh | grep boot

/dev/sda1 2.5G 232M 2.3G 10% /boot

controller:~$

If the available space is greater than 200 MB, proceed with the controller upgrade.
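As a minimal sketch, you can make the 200 MB decision from one line of `df -kh | grep boot` output. The sketch assumes that the value to compare is the Avail column (the fourth field). It also assumes that sizes carry the usual `K`/`M`/`G`/`T` suffixes with 1024-based units. `boot_has_room` and `size_to_mb` are hypothetical helpers.

```python
# Minimal sketch: decide whether /boot has more than 200 MB available, from one
# line of `df -kh | grep boot` output. Assumes 1024-based K/M/G/T suffixes.
UNIT_TO_MB = {"K": 1 / 1024, "M": 1.0, "G": 1024.0, "T": 1024.0 * 1024}


def size_to_mb(value):
    """Convert a df -h style size such as '2.3G', '232M' or '0' to megabytes."""
    unit = value[-1].upper()
    if unit in UNIT_TO_MB:
        return float(value[:-1]) * UNIT_TO_MB[unit]
    return float(value) / (1024 * 1024)  # no suffix: treat the value as bytes


def boot_has_room(df_line, required_mb=200):
    """Return (enough_space, available_mb) for a line such as
    '/dev/sda1 2.5G 232M 2.3G 10% /boot'."""
    fields = df_line.split()
    available_mb = size_to_mb(fields[3])  # fourth column is Avail
    return available_mb > required_mb, available_mb


enough, available = boot_has_room("/dev/sda1 2.5G 232M 2.3G 10% /boot")
print(enough, round(available))  # True 2355
```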

If the available space is less than 200 MB, perform these steps:

1. Verify that the current version is the only version listed in the show software output. This check applies to all 3 controllers: vManage, vBond, and vSmart.

VERSION ACTIVE DEFAULT PREVIOUS CONFIRMED TIMESTAMP

------------------------------------------------------------------------------

20.12.6 true true false auto 2023-05-02T16:48:45-00:00

20.9.1 false false true user 2023-05-02T19:16:09-00:00

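2. Verify that the current version is set as the default in the show software output. If it is not, set it with the request software set-default <version> command. This check applies to all 3 controllers: vManage, vBond, and vSmart.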

controller# request software set-default 20.12.6

status mkdefault 20.12.6: successful

controller#

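3. If more versions are listed, use the request software remove <version> command to remove every version that is not active. This frees space for the upgrade. This check applies to all 3 controllers: vManage, vBond, and vSmart.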

controller# request software remove 20.9.1

status remove 20.9.1: successful

controller# show software

VERSION ACTIVE DEFAULT PREVIOUS CONFIRMED TIMESTAMP

------------------------------------------------------------------------------

20.12.6 true true false auto 2023-05-02T16:48:45-00:00

controller#

4. Check the disk space again to ensure that more than 200 MB is available. If it is not, open a TAC SR.
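You can pre-screen the version cleanup in steps 1 to 3 from saved `show software` output. This minimal sketch assumes the column order shown in this document (VERSION, ACTIVE, DEFAULT, ...). `removable_versions` and `is_active_and_default` are hypothetical helpers. Use `removable_versions` only before the upgrade. After the upgrade, the previous image is the one that the vBond and vSmart rollback plan activates, so do not remove it while you might still need to roll back.

```python
# Minimal sketch: parse `show software` output (columns VERSION, ACTIVE,
# DEFAULT, PREVIOUS, CONFIRMED, TIMESTAMP) into simple records.
def parse_show_software(output):
    rows = []
    for line in output.splitlines():
        fields = line.split()
        # Data rows start with a dotted, numeric release string such as 20.12.6;
        # headers, dashed separators and prompt lines are skipped.
        if len(fields) >= 3 and fields[0][0].isdigit() and "." in fields[0]:
            rows.append({
                "version": fields[0],
                "active": fields[1] == "true",
                "default": fields[2] == "true",
            })
    return rows


def removable_versions(output):
    """Versions that are neither active nor default (pre-upgrade cleanup candidates)."""
    return [row["version"] for row in parse_show_software(output)
            if not row["active"] and not row["default"]]


def is_active_and_default(output, version):
    """True if `version` is both the active and the default image."""
    return any(row["version"] == version and row["active"] and row["default"]
               for row in parse_show_software(output))


BEFORE = """VERSION ACTIVE DEFAULT PREVIOUS CONFIRMED TIMESTAMP
------------------------------------------------------------------------------
20.12.6 true true false auto 2023-05-02T16:48:45-00:00
20.9.1 false false true user 2023-05-02T19:16:09-00:00
"""
print(removable_versions(BEFORE))  # ['20.9.1']
```

After the default version is set at the end of the upgrade, `is_active_and_default(output, "20.15.4.1")` can also confirm the final state.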

Before and After Commands

Before and after the upgrade, run these commands to verify that the controllers have converged correctly:

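- show control connections

Ensure that each controller has active control connections with all other controllers (full mesh).

- show omp peers (vSmart only)

Verify the number of OMP peers on each vSmart controller.

- show omp summary (vSmart only)

Check the overall OMP state and peer information.

- show running policy (vSmart only)

Confirm that all expected policies are active and visible on the controller.

Compare the output of these commands before and after the upgrade to confirm network stability and proper convergence.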
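To make the before/after comparison less error-prone, save each command's output as text and diff the two captures. This minimal sketch uses Python's standard `difflib`. The `compare_snapshots` name and the dictionary layout (command string mapped to captured text) are assumptions. Counters such as uptime always change between captures, so treat the diff as a list of lines to review, not as a pass/fail result.

```python
import difflib


def compare_snapshots(before, after):
    """Compare two captures keyed by command string.

    Returns {command: unified-diff lines} for every command whose output
    differs between the captures or is missing from one of them.
    """
    report = {}
    for command in sorted(set(before) | set(after)):
        old_lines = before.get(command, "").splitlines()
        new_lines = after.get(command, "").splitlines()
        if old_lines != new_lines:
            report[command] = list(
                difflib.unified_diff(old_lines, new_lines, "before", "after", lineterm="")
            )
    return report


# Simplified, hypothetical captures from one vSmart
before = {"show omp peers": "10.1.1.1 up\n10.1.1.2 up"}
after = {"show omp peers": "10.1.1.1 up\n10.1.1.2 down"}
for command, diff in compare_snapshots(before, after).items():
    print(command)
    print("\n".join(diff))
```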

Controller Upgrade Workflow

vManage Cluster Upgrade

For a cluster upgrade, you must follow the steps in Cisco SD-WAN Getting Started Guide - Cluster Management [Cisco SD-WAN] - Cisco.

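Note: A vManage cluster upgrade has no impact on the data network. For a standalone vManage, you can use the vManage UI to install and activate the new software. For a vManage cluster, the recommended method is to install the software from the vManage UI and activate it from the vManage CLI with request software activate <version>, as described in the section Upgrade SD-WAN Controllers via CLI.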

Caution: If you run into any problem while you upgrade the cluster, contact TAC before you continue.

Upgrade SD-WAN Controllers via the vManage Graphical User Interface (GUI)

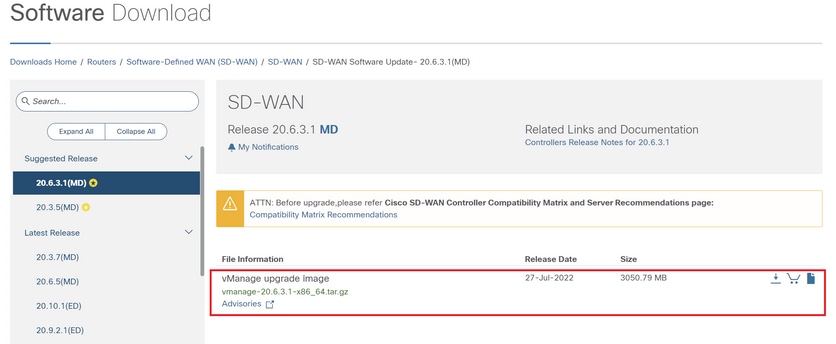

Step 1. Upload the Software Image to the vManage Repository

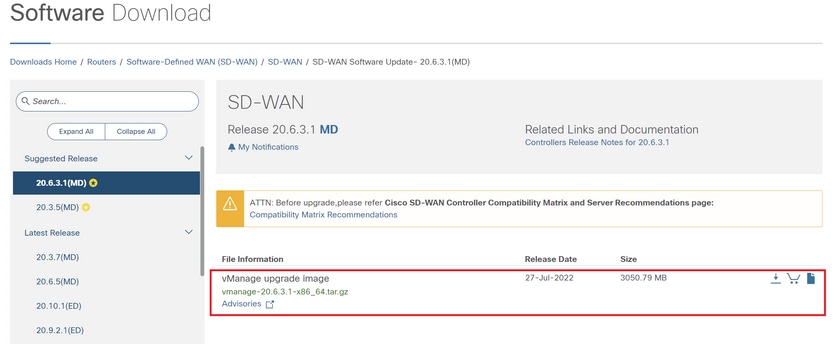

Navigate to Software Download and download the required software release image for vManage.

Note: Controllers have two types of images: new deployment and upgrade. For the procedure in this guide, download the upgrade image.

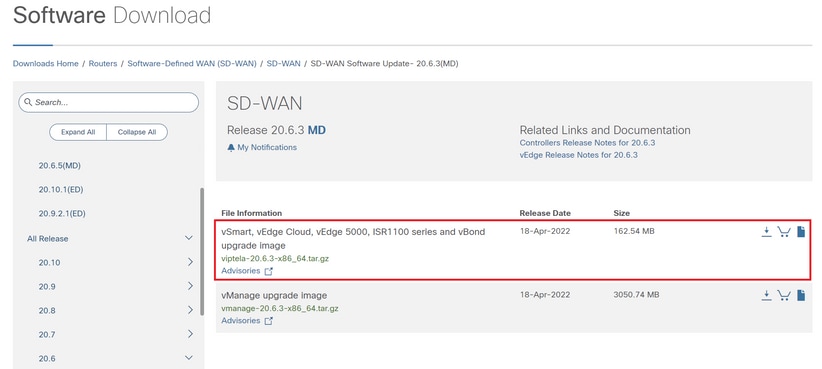

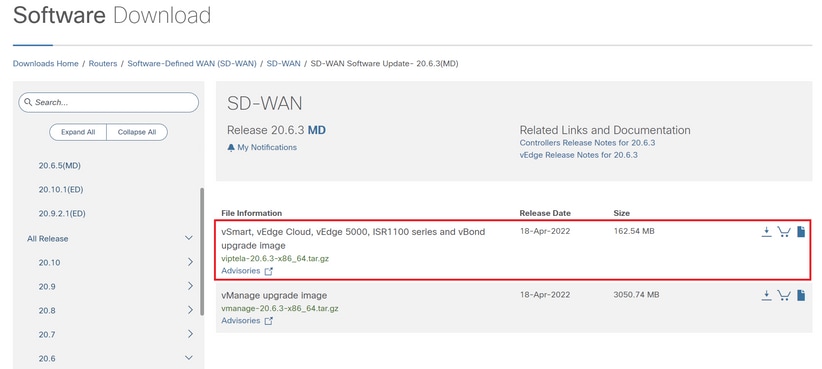

Navigate to Software Download and download the software release image for vBond and vSmart.

Note: vBond and vSmart use the same upgrade image.

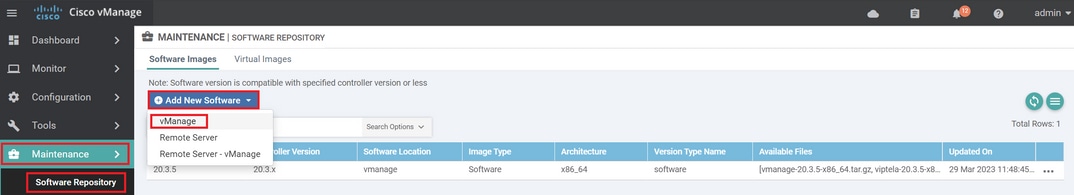

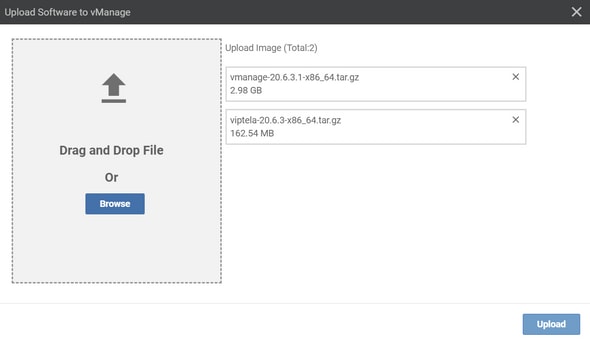

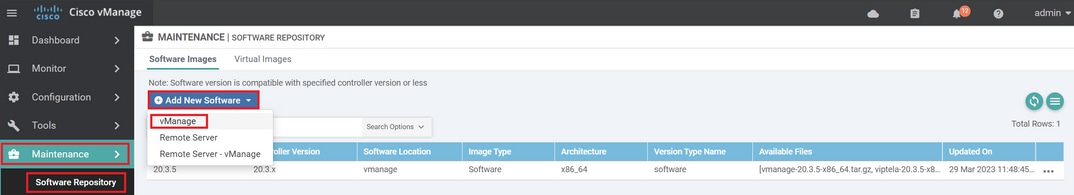

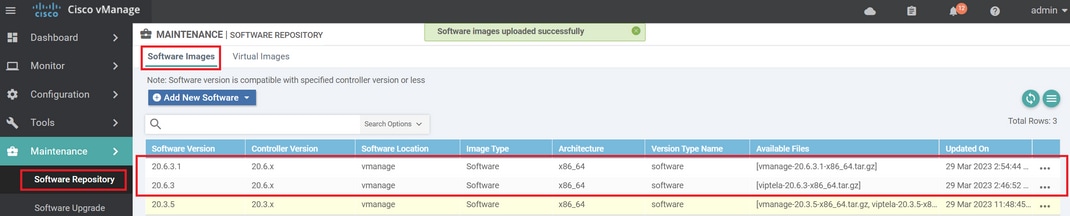

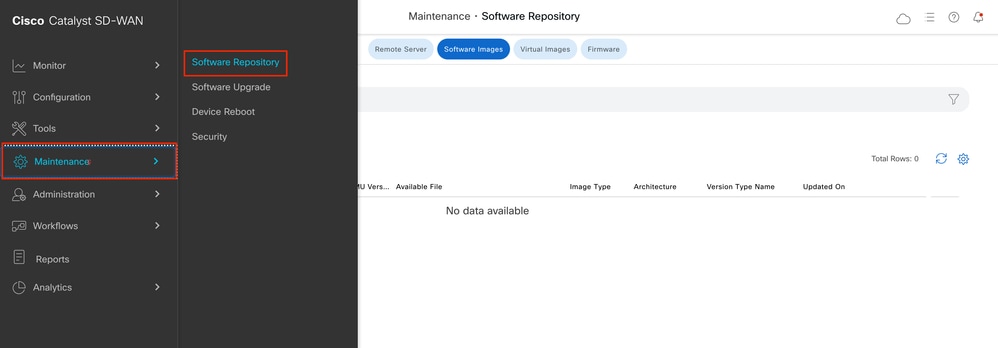

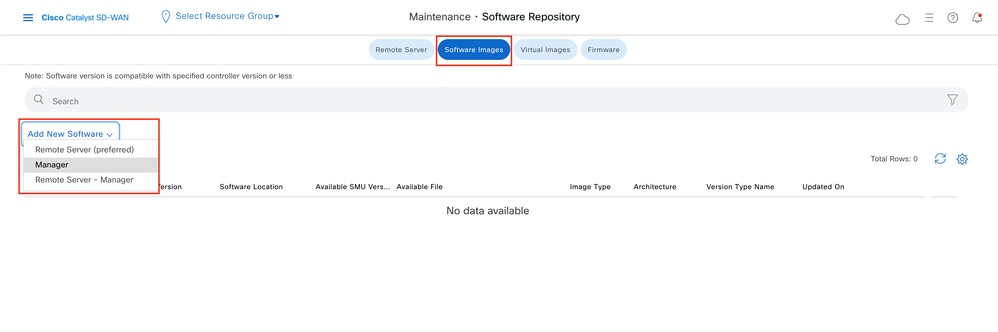

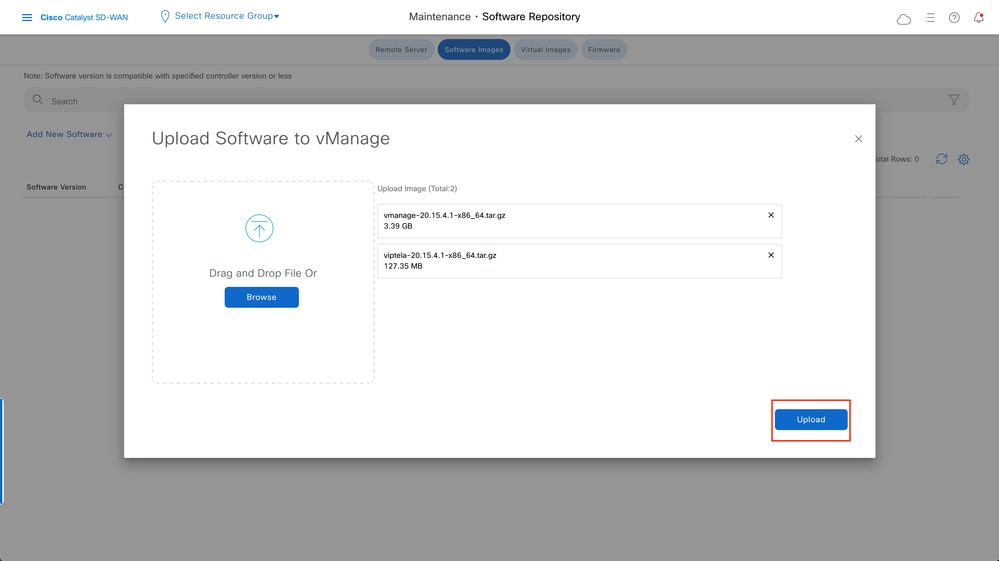

To upload the new image, navigate to Maintenance > Software Repository > Software Images.

Click Add New Software, and choose vManage from the drop-down list.

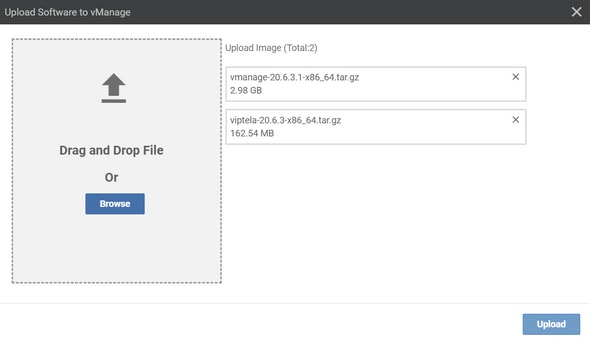

Choose the image, and click Upload.

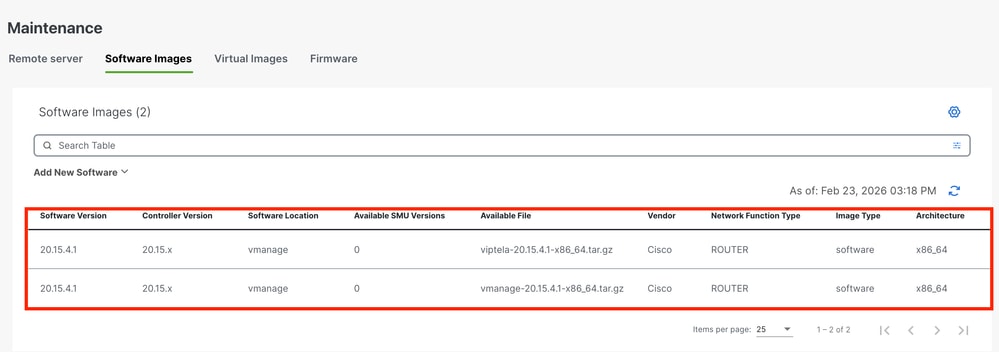

After the upload completes, confirm that the image is listed in Software Repository > Software Images.

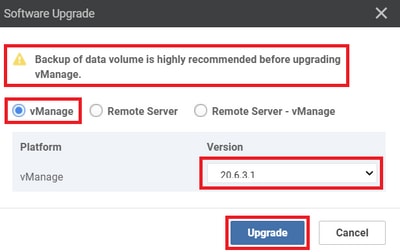

Step 2. Install, Activate, and Set the New Version as Default

This step explains how to perform the upgrade in three stages: install the new version, activate it, and set it as the default.

vManage

Caution: Ensure that you have completed the pre-checks required before the vManage upgrade.

Step A. Install

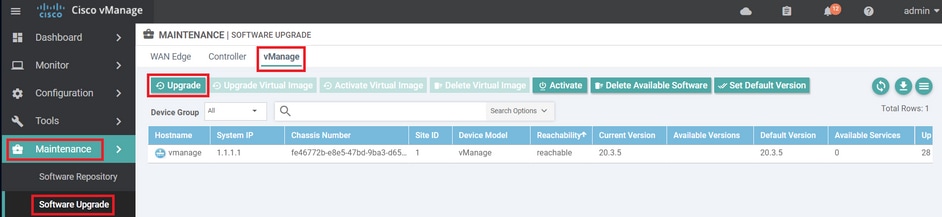

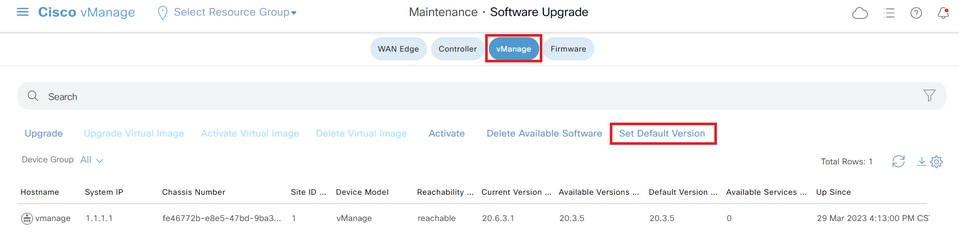

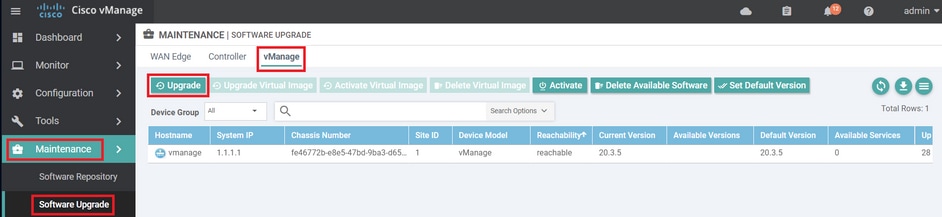

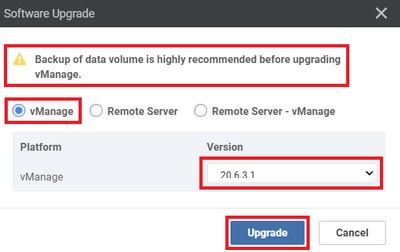

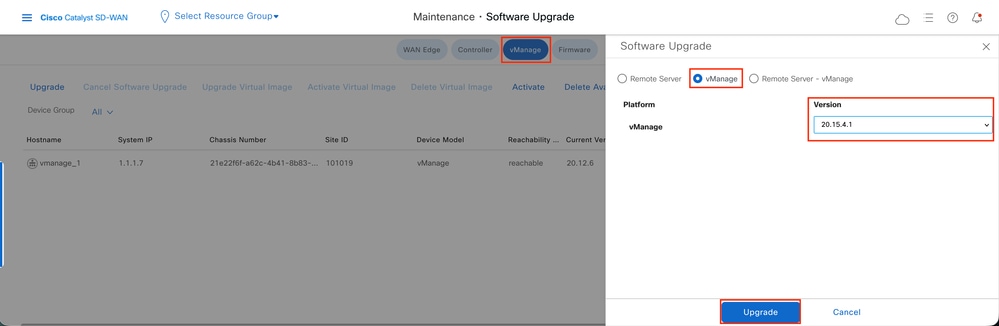

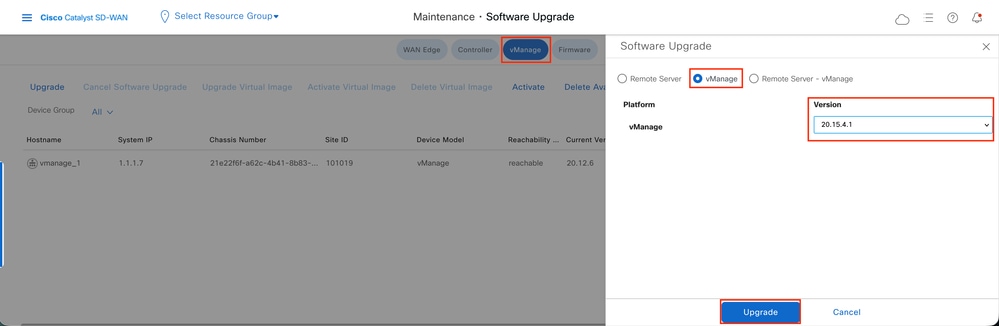

From the main menu, navigate to Maintenance > Software Upgrade > vManage, and click Upgrade.

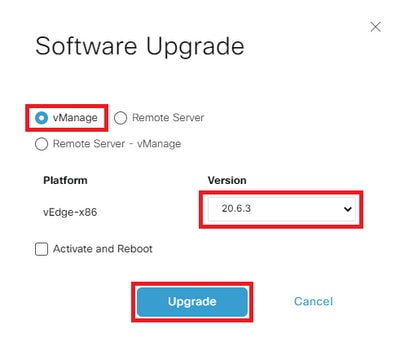

In the Software Upgrade pop-up window, perform these actions:

- Choose the vManage tab.

- From the Version drop-down list, choose the image version to upgrade to.

- Click Upgrade.

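Note: This process does not reboot vManage. It only transfers and decompresses the image and creates the directories required for the upgrade.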

Note: It is strongly recommended that you back up the data volume before you continue with the vManage upgrade.

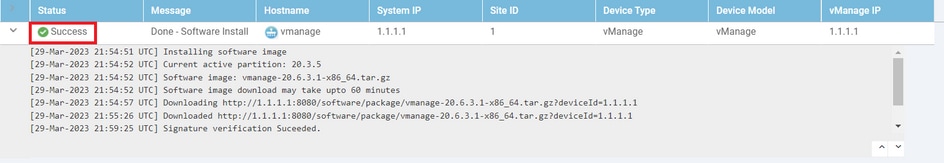

Monitor the task status until it shows Success.

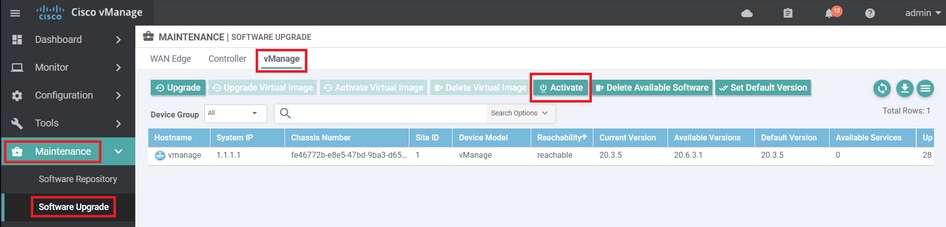

Step B. Activate

In this step, vManage activates the newly installed software version and reboots itself to boot with the new software.

Navigate to Maintenance > Software Upgrade > vManage, and click Activate.

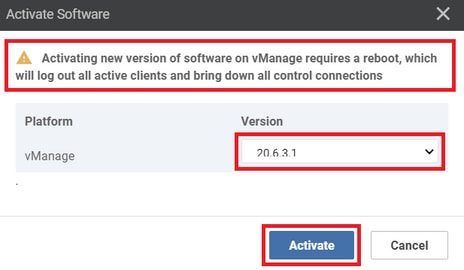

Choose the new version, and click Activate.

Note: The GUI is not accessible while vManage reboots. Full activation can take up to 60 minutes.

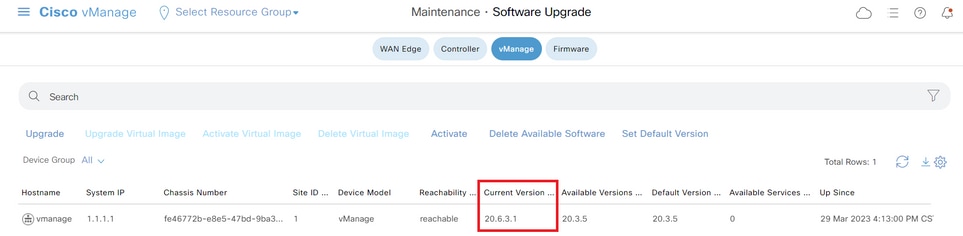

When the process completes, log in and navigate to Maintenance > Software Upgrade > Manager to verify that the new version is active.

Step C. Set the Default Software Version

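You can set a software image as the default image on a Cisco SD-WAN device. It is recommended that you set the new image as the default after you verify that the software works as expected on the device and in the network.

If you perform a factory reset on the device, the device boots with the image that is set as the default.

Note: Setting the new version as the default is recommended because vManage otherwise boots the old (default) version if it reboots. That amounts to a downgrade from the new major release to an older one. This is not supported on vManage and can corrupt the database.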

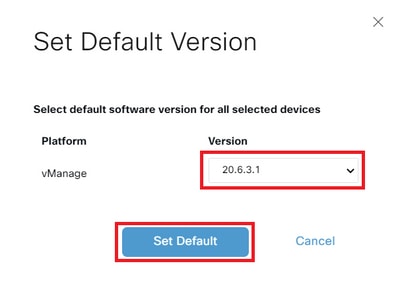

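To set a software image as the default image, perform these actions:

- Navigate to Maintenance > Software Upgrade > Manager > Software Image Actions.

- Click Set Default Version, choose the new version from the drop-down list, and click Set Default.

Note: This process does not reboot vManage.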

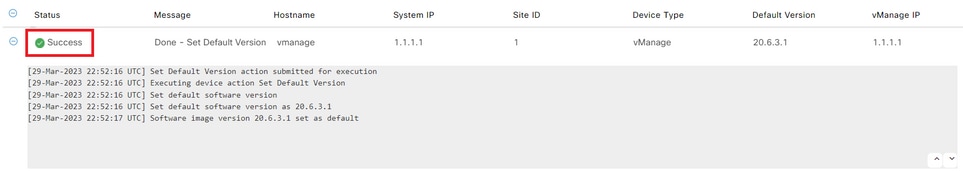

Monitor the task status until it shows Success.

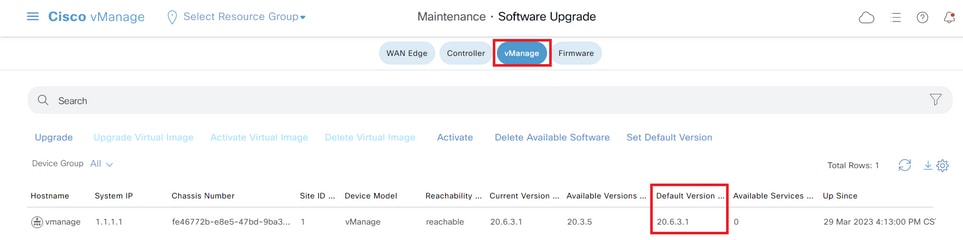

To verify the default version, navigate to Maintenance > Software Upgrade > Manager.

vBond

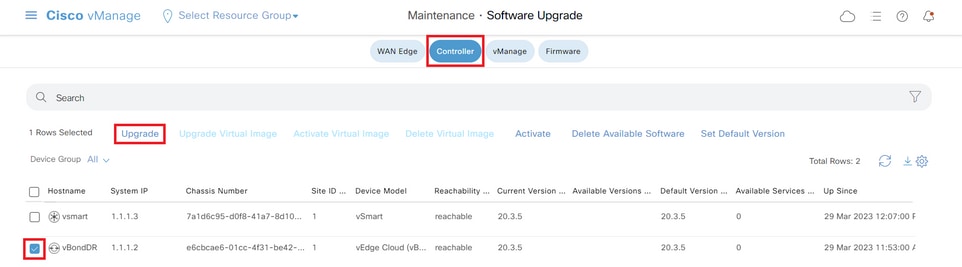

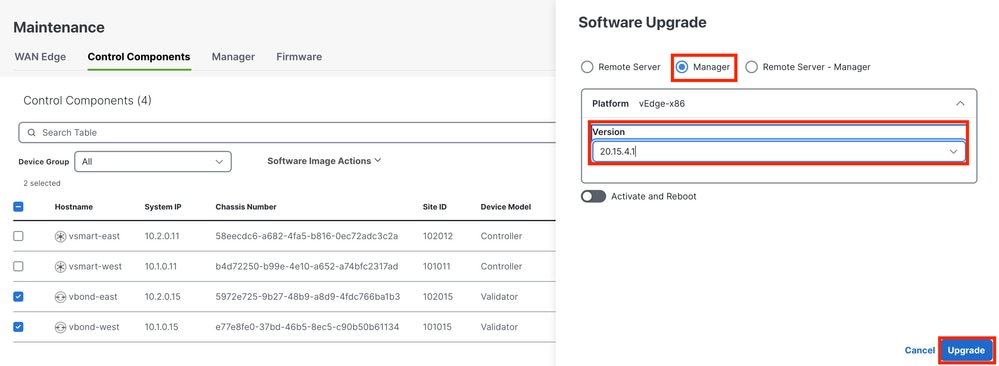

Step A. Install

In this step, vManage sends the new software to vBond and installs the new image.

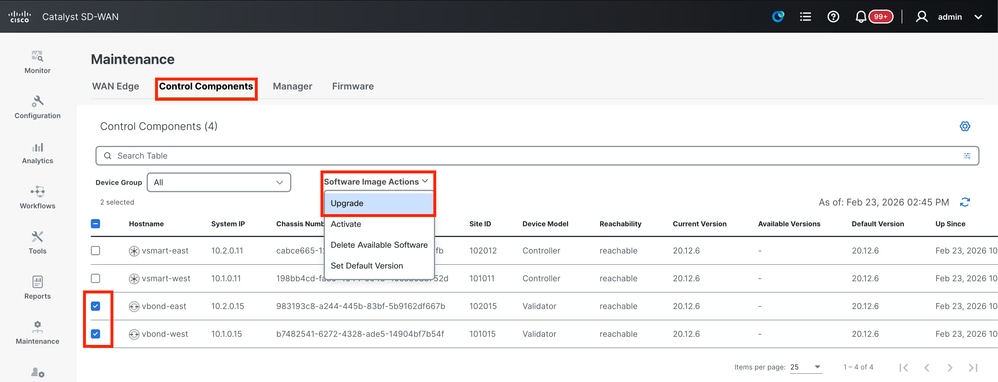

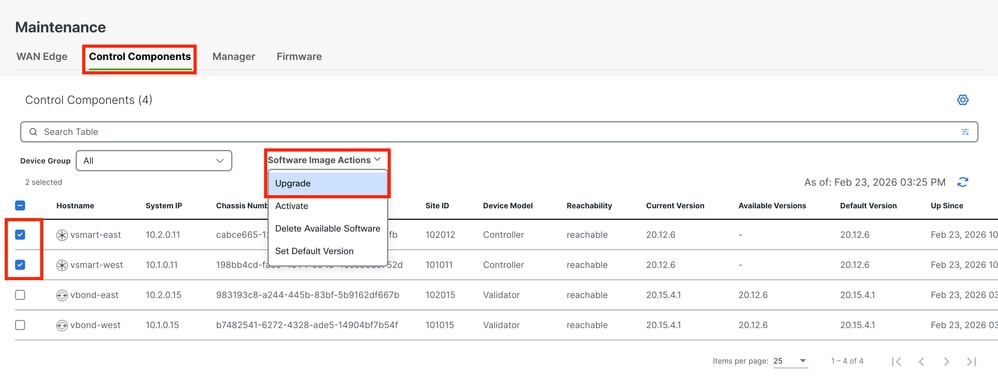

Navigate to Maintenance > Software Upgrade > Control Components, and click Upgrade.

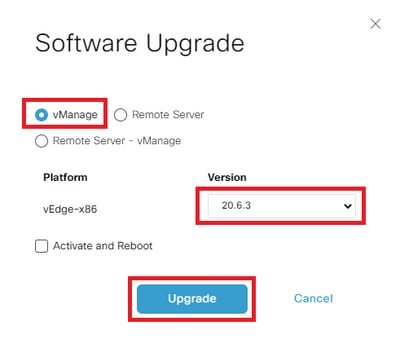

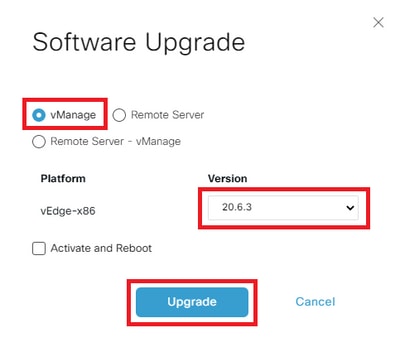

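In the Software Upgrade pop-up window, perform these actions:

- Choose the vManage tab.

- From the Version drop-down list, choose the image version to upgrade to.

- Click Upgrade.

Note: This process does not reboot vBond. It only transfers and decompresses the image and creates the directories required for the upgrade.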

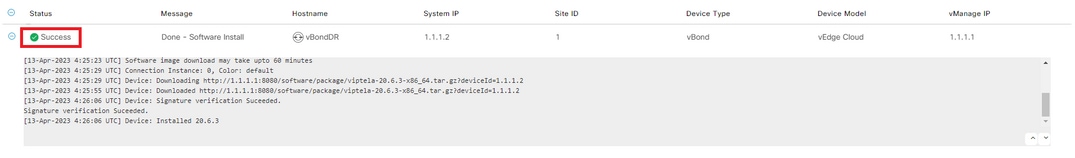

Monitor the task status until it shows Success.

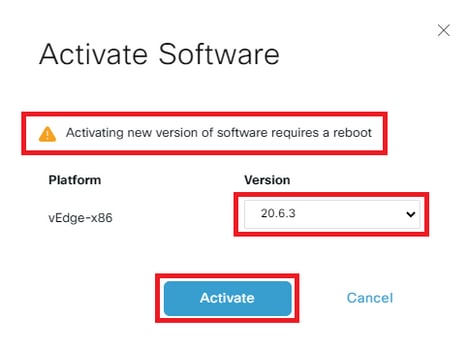

Step B. Activate

In this step, vBond activates the newly installed software version and reboots itself to boot with the new software.

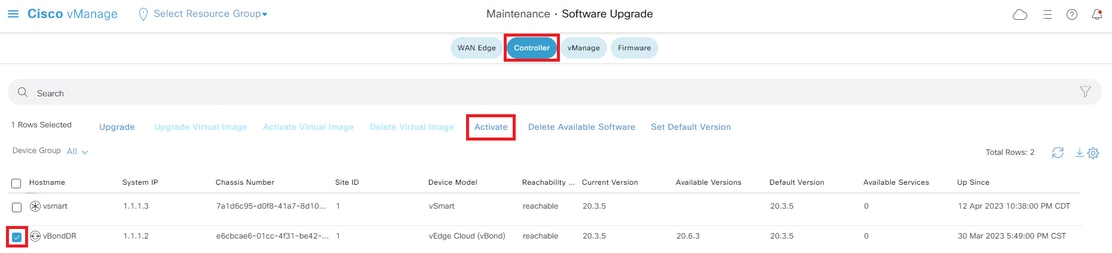

Navigate to Maintenance > Software Upgrade > Control Components, and click Activate.

Choose the new version, and click Activate.

Note: This process reboots vBond. Activation can take up to 30 minutes to complete.

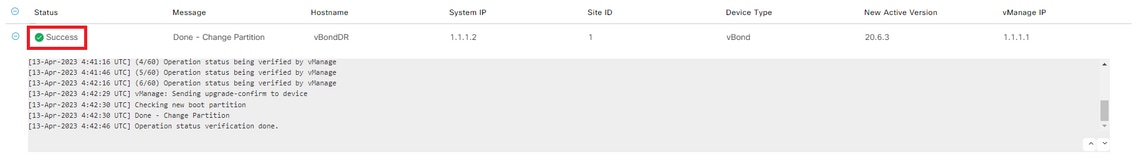

Monitor the task status until it shows Success.

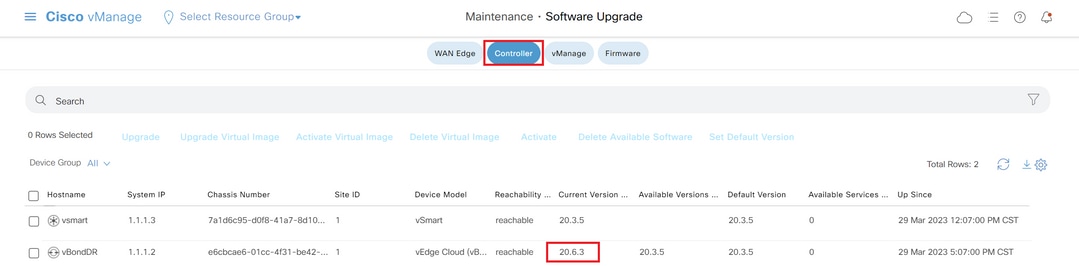

When the process completes, navigate to Maintenance > Software Upgrade > Control Components to verify that the new version is active.

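Optional Step. Activate and Reboot the New Software Image

Note: This step is optional. During installation, you can check the Activate and Reboot check box to install and activate the new upgrade software version in a single operation.

Step C. Set the Default Software Version

You can set a software image as the default image on a Cisco SD-WAN device. It is recommended that you set the new image as the default after you verify that the software works as expected on the device and in the network.

If you perform a factory reset on the device, the device boots with the image that is set as the default.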

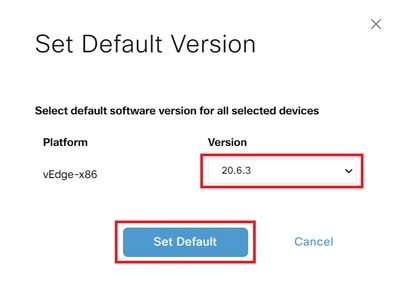

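To set a software image as the default image, perform these actions:

- Navigate to Maintenance > Software Upgrade > Control Components > Software Image Actions.

- Click Set Default Version, choose the new version from the drop-down list, and click Set Default.

Note: This process does not reboot vBond.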

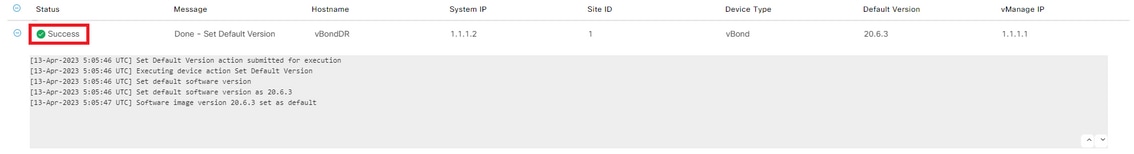

Monitor the task status until it shows Success.

To verify the default version, navigate to Maintenance > Software Upgrade > Control Components.

vSmart

vSmart (Controller) Upgrade Guidelines

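- Cisco recommends upgrading 50% of the vSmart controllers first. After this initial upgrade, monitor the system for at least 24 hours to confirm stability. If no issues are found, upgrade the remaining vSmart controllers.

- Cisco recommends using the vManage GUI rather than the CLI to upgrade vSmart controllers.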

Note: The vManage GUI provides a more streamlined, user-friendly upgrade process.
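You can plan the staged approach with a small helper that splits the vSmart list into two waves and computes the earliest start time for the second wave. This is a minimal sketch. `vsmart_batches` and `earliest_second_wave` are hypothetical. Rounding the first wave down for an odd number of vSmarts (while keeping at least one) is an assumption, not a Cisco rule. With a single vSmart, there is no second wave.

```python
from datetime import datetime, timedelta

SOAK_PERIOD = timedelta(hours=24)  # minimum monitoring time from this document


def vsmart_batches(vsmarts):
    """Split vSmart hostnames into (first_wave, second_wave).

    The first wave is half the list rounded down, but at least one controller.
    Rounding down for odd counts is an assumption, not a Cisco rule.
    """
    if not vsmarts:
        return [], []
    first = max(1, len(vsmarts) // 2)
    return list(vsmarts[:first]), list(vsmarts[first:])


def earliest_second_wave(first_wave_done):
    """Earliest time the second wave may start, if no issues were found."""
    return first_wave_done + SOAK_PERIOD


wave1, wave2 = vsmart_batches(["vsmart-1", "vsmart-2", "vsmart-3", "vsmart-4"])
print(wave1, wave2)  # ['vsmart-1', 'vsmart-2'] ['vsmart-3', 'vsmart-4']
print(earliest_second_wave(datetime(2025, 3, 1, 22, 0)))  # 2025-03-02 22:00:00
```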

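Before you upgrade the vSmart controllers, ensure that these prerequisites are met so that data-plane operation continues seamlessly after the upgrade.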

Prerequisites

Step 1. Collect Reference Screenshots from the Dashboard

- For the vSmart controllers, navigate to Monitor > Overview > Controller > System Status to capture CPU and memory utilization.

- From vManage, collect the real-time show omp summary output for all vSmarts.

Step 2. Check for OMP Peer State Notifications

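- Review the Events or Alarms dashboard for any notification that an OMP peer state is down.

- To do this, navigate to Monitor > Logs > Alarms/Events, and look for related alarms before you continue with the upgrade.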

Step 3. Back Up the vSmart Configuration

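- Collect the running configuration of all vSmart controllers, including the current vSmart policies.

- Save these configurations as a backup so that you can restore the settings after the upgrade if needed.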

Step 4. Check Disk Space

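Before you start the upgrade, review disk usage on the vSmart to ensure that enough free space is available. Pay particular attention to partitions that are at or near full capacity. In this output, for example, /var/volatile/log/tmplog is at 100%. Resolve storage issues as needed to avoid upgrade failure or operational disruption.

vSmart# df -h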

Filesystem Size Used Avail Use% Mounted on

none 7.6G 4.0K 7.6G 1% /dev

/dev/nvme0n1p1 7.9G 1.8G 6.0G 23% /boot

/dev/loop0 139M 139M 0 100% /rootfs.ro

/dev/nvme1n1 20G 7.6G 12G 41% /rootfs.rw

aufs 20G 7.6G 12G 41% /

tmpfs 7.6G 728K 7.6G 1% /run

shm 7.6G 16K 7.6G 1% /dev/shm

tmp 1.0G 16K 1.0G 1% /tmp

tmplog 120M 120M 0 100% /var/volatile/log/tmplog

svtmp 2.0M 1.2M 876K 58% /etc/sv

vSmart#

Step 5. Monitor vSmart Resource Utilization

- Check CPU and memory usage on the vSmart controllers.

- In vshell, run the top and free -m commands to collect current resource statistics.

vSmart# vshell

vSmart:~$ top

vSmart:~$ free -m

Step 6. Verify OMP and Control Status on All vSmarts

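- Verify OMP and control status on all vSmarts to establish a baseline before you make changes.

- Run show omp summary and record the output as a reference of the original state for comparison after the upgrade.

- Use show control summary and show control connections to confirm that each vSmart has the correct number of control connections to edge devices, other vSmarts, vBonds, and vManage.

- Run show control local-properties for additional control-plane details.

- Run show system status to ensure that each vSmart is in vManaged state and that Configuration template is not set to None.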

Step 7. Verify a Sample of Edge Devices

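Select a sample of 10 to 15 devices from different site lists, and perform these checks:

- Run show sdwan omp peers to ensure that each device has active OMP peering with all expected vSmarts.

- Run show sdwan omp summary to view the overall OMP state.

- Use show sdwan bfd sessions to capture the number of active BFD sessions and their uptime.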

Cisco Recommended Configuration Settings

These settings are not required for the upgrade, but Cisco strongly recommends these configuration best practices for optimal operation.

OMP Hold Timer

- For software release 20.12.1 and later, configure the OMP hold timer to 300 seconds.

- For releases earlier than 20.12.1, set the OMP hold timer to 60 seconds.

OMP Graceful Restart Timer

- The default timer of 12 hours (43,200 seconds) is usually sufficient to maintain data-plane tunnels during a temporary vSmart outage.

IPsec Rekey Timer

- To prevent IPsec rekeying while OMP is down, configure the IPsec rekey timer to twice the OMP graceful restart timer value.

These settings improve network stability and minimize disruption during planned or unplanned outages.
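The timer guidance above reduces to simple arithmetic. The following is a minimal sketch. It assumes that release strings are purely numeric and dotted (for example 20.15.4.1). The helpers are hypothetical and return the values recommended in this section. With the default graceful restart timer of 43,200 seconds, twice that value is 86,400 seconds (24 hours). The helpers only compute numbers and do not generate device configuration.

```python
def parse_release(version):
    """'20.15.4.1' -> (20, 15, 4, 1); assumes a purely numeric dotted release."""
    return tuple(int(part) for part in version.split("."))


def recommended_omp_hold_time(version):
    """OMP hold time in seconds, per this section: 300 s from 20.12.1, else 60 s."""
    return 300 if parse_release(version) >= (20, 12, 1) else 60


def recommended_ipsec_rekey(graceful_restart_seconds=43_200):
    """IPsec rekey timer set to twice the OMP graceful restart timer."""
    return 2 * graceful_restart_seconds


print(recommended_omp_hold_time("20.15.4.1"))  # 300
print(recommended_omp_hold_time("20.9.1"))     # 60
print(recommended_ipsec_rekey())               # 86400
```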

Continue to the next step only if all validations succeed.

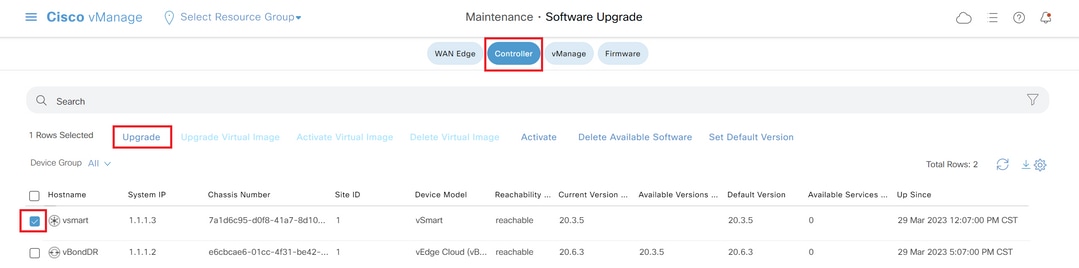

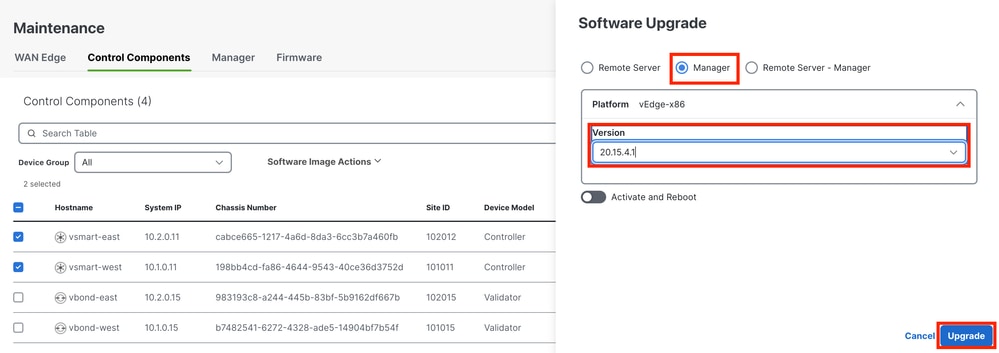

Step A. Install

In this step, vManage sends the new software to vSmart and installs the new image.

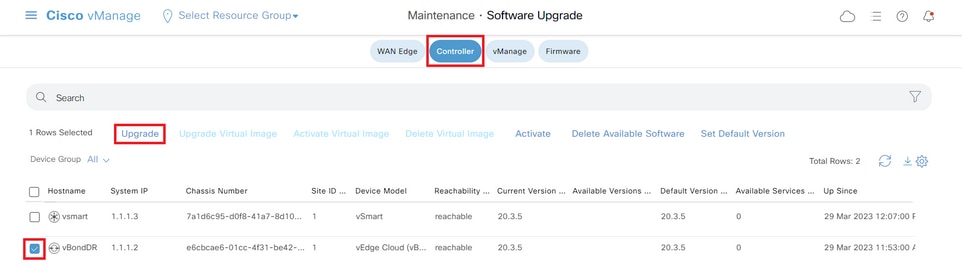

Navigate to Maintenance > Software Upgrade > Controller, and click Upgrade.

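In the Software Upgrade pop-up window, perform these actions:

- Choose the vManage tab.

- From the Version drop-down list, choose the image version to upgrade to.

- Click Upgrade.

Note: This process does not reboot vSmart. It only transfers and decompresses the image and creates the directories required for the upgrade.

Monitor the task status until it shows Success.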

Step B. Activate

In this step, vSmart activates the newly installed software version and reboots itself to boot with the new software.

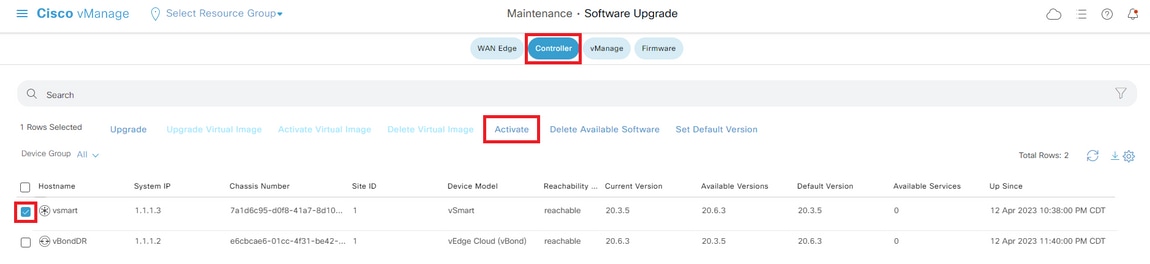

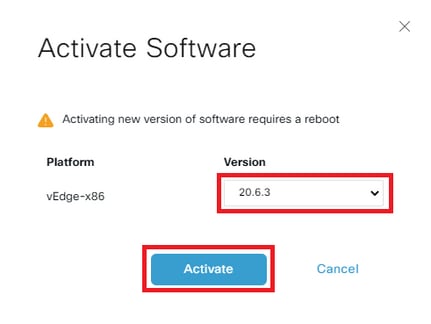

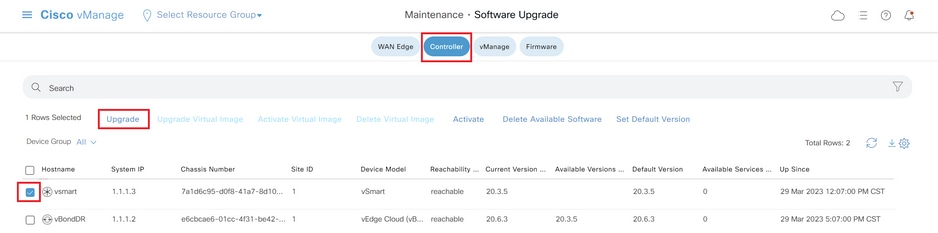

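Navigate to Maintenance > Software Upgrade > Controller, and click Activate.

Choose the new version, and click Activate.

Note: This process reboots vSmart. Activation can take up to 30 minutes to complete.

Monitor the task status until it shows Success.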

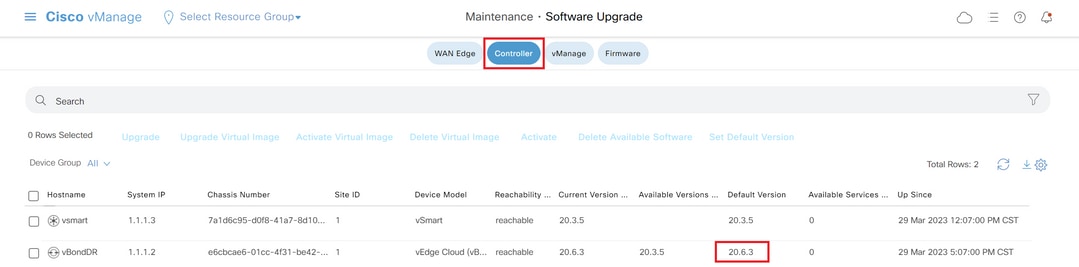

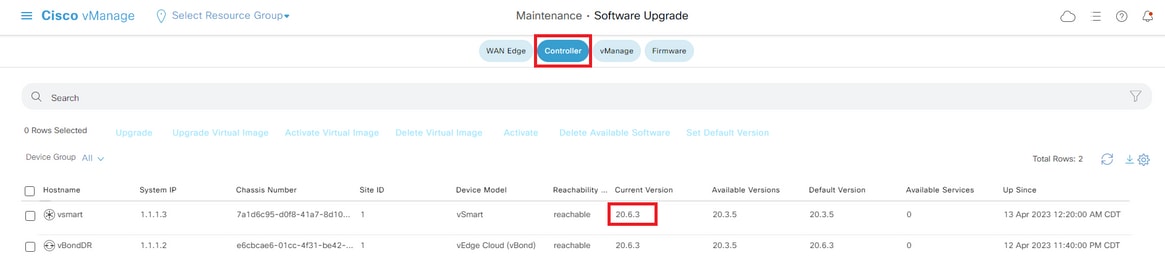

When the procedure completes, navigate to Maintenance > Software Upgrade > Controller to confirm that the new version is active.

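Optional Step. Activate and Reboot the New Software Image

Note: This step is optional. During installation, you can check the Activate and Reboot check box to install and activate the new upgrade software version in a single operation.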

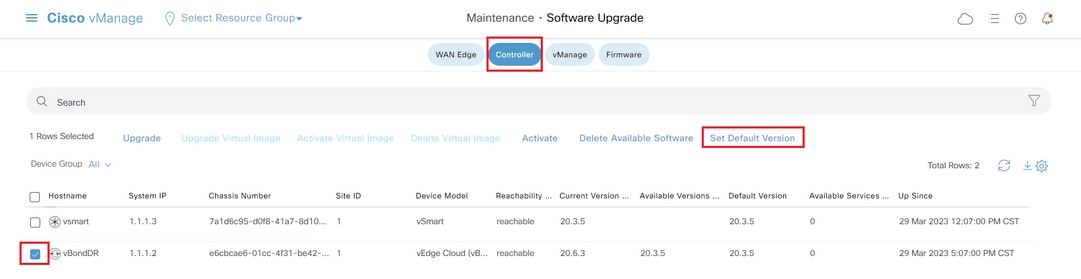

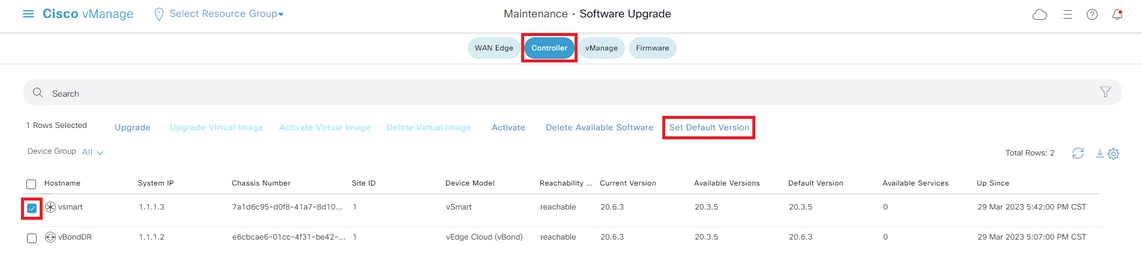

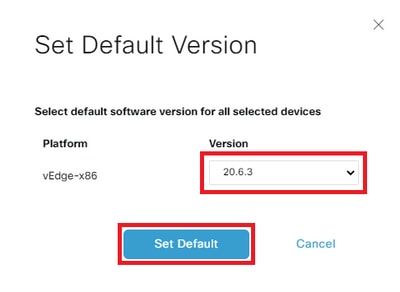

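Step C. Set the Default Software Version

You can set a software image as the default image on a Cisco SD-WAN device. It is recommended that you set the new image as the default after you verify that the software works as expected on the device and in the network.

If you perform a factory reset on the device, the device boots with the image that is set as the default.

To set a software image as the default image, perform these actions:

- Navigate to Maintenance > Software Upgrade > Controller.

- Click Set Default Version, choose the new version from the drop-down list, and click Set Default.

Note: This process does not reboot vSmart.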

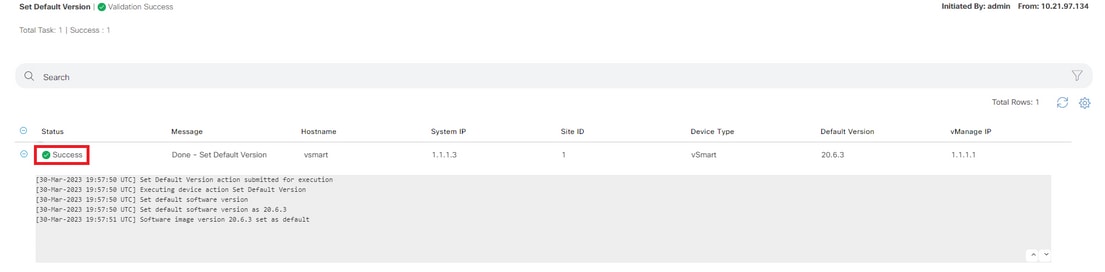

Monitor the task status until it shows Success.

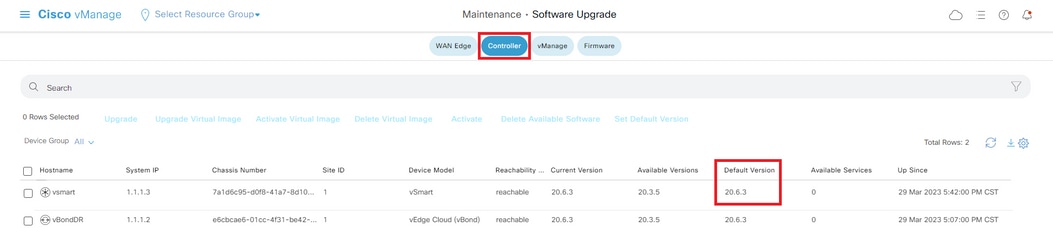

To verify the default version, navigate to Maintenance > Software Upgrade > Controller.

Upgrade SD-WAN Controllers via CLI

Step 1. Install

There are two options to install the image:

Option 1: From the CLI, using HTTP, FTP, or TFTP.

To install a software image from the CLI, perform these actions:

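1. Configure a time limit for confirming that the software upgrade is successful. The time can be from 1 through 60 minutes.

Viptela(config)# system upgrade-confirm minutes

2. Install the software:

Viptela# request software install url/vmanage-20.15.4.1-x86_64.tar.gz [reboot]

Specify the image location in one of these ways:

- The image file is on the local server:

/directory-path/

You can use CLI autocompletion to complete the path and filename.

- The image file is on an FTP server:

ftp://hostname/

- The image file is on an HTTP server:

http://hostname/

- The image file is on a TFTP server:

tftp://hostname/

Optionally, specify the VPN identifier in which the server is located.

The reboot option activates the new software image and reboots the device after the installation completes.

3. If you did not include the reboot option in step 2, activate the new software image. Activation automatically reboots the instance so that it boots with the new version.

Viptela# request software activate <version>

4. Within the configured upgrade-confirm time limit (12 minutes by default), confirm that the software installation was successful:

Viptela# request software upgrade-confirm

If you do not issue this command within the time limit, the device automatically reverts to the previous software image.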
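If you script the CLI option, build and sanity-check the command strings before you paste them. This minimal sketch covers only what this section describes: the FTP, HTTP, and TFTP schemes or a local path, the optional reboot keyword, and the 1 to 60 minute range for upgrade-confirm. The helper names are hypothetical. Placing the optional `vpn <id>` keyword after `reboot` is an assumption, so confirm the syntax with `?` on your release.

```python
from urllib.parse import urlparse

SUPPORTED_SCHEMES = {"ftp", "http", "tftp"}  # remote options listed in this section


def install_command(image, reboot=False, vpn=None):
    """Build a `request software install` command for a local path or URL."""
    scheme = urlparse(image).scheme
    if scheme and scheme not in SUPPORTED_SCHEMES:
        raise ValueError(f"unsupported scheme {scheme!r}; use ftp, http, tftp or a local path")
    parts = ["request software install", image]
    if reboot:
        parts.append("reboot")
    if vpn is not None:
        parts.append(f"vpn {vpn}")  # keyword placement is an assumption
    return " ".join(parts)


def upgrade_confirm_command(minutes):
    """Build the `system upgrade-confirm` setting; the allowed range is 1-60 minutes."""
    if not 1 <= minutes <= 60:
        raise ValueError("upgrade-confirm time must be from 1 through 60 minutes")
    return f"system upgrade-confirm {minutes}"


print(upgrade_confirm_command(15))
print(install_command("http://hostname/vmanage-20.15.4.1-x86_64.tar.gz", reboot=True))
```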

Option 2: From the vManage GUI

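This option uploads the image to the vManage repository.

Navigate to Software Download, and download the software release image for vManage.

Navigate to Software Download, and download the software release image for vBond and vSmart.

To upload the new image, from the main menu navigate to Maintenance > Software Repository > Software Images, click Add New Software, and choose vManage from the drop-down list.

Choose the image, and click Upload.

To verify that the image is available, navigate to Software Repository > Software Images.

Note: Complete this procedure for all controllers.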

vManage:

Click Upgrade.

vBond:

Click Upgrade.

vSmart:

Click Upgrade.

In the Software Upgrade pop-up window, perform these actions:

- Choose the vManage tab.

- From the Version drop-down list, choose the image version to upgrade to.

- Click Upgrade.

For vManage:

For vBond and vSmart:

Step 2. Activate

After the installation completes, confirm the software images installed on each controller.

vmanage# show software

VERSION ACTIVE DEFAULT PREVIOUS CONFIRMED TIMESTAMP

---------------------------------------------------------------------------

20.12.6 true true - - 2023-02-01T22:25:24-00:00

20.15.4.1 false false false - -

vbond# show software

VERSION ACTIVE DEFAULT PREVIOUS CONFIRMED TIMESTAMP

--------------------------------------------------------------------------

20.12.6 true true - - 2022-10-01T00:30:40-00:00

20.15.4.1 false false false - -

vsmart# show software

VERSION ACTIVE DEFAULT PREVIOUS CONFIRMED TIMESTAMP

--------------------------------------------------------------------------

20.12.6 true true - - 2022-10-01T00:31:34-00:00

20.15.4.1 false false false - -

Note: To activate the image, issue this command on the controllers one at a time: first vManage, then vBond, then vSmart. In a vManage cluster, the software must be activated on all vManage nodes in the cluster together.

vmanage# request software activate ?

Description: Display software versions

Possible completions:

20.12.6

20.15.4.1

clean Clean activation

now Activate software version

vmanage# request software activate 20.15.4.1

This will reboot the node with the activated version.

Are you sure you want to proceed? [yes,NO] yes

Broadcast message from root@vmanage (console) (Tue Feb 28 01:01:04 2023):

Tue Feb 28 01:01:04 UTC 2023: The system is going down for reboot NOW!

vbond# request software activate ?

Description: Display software versions

Possible completions:

20.12.6

20.15.4.1

clean Clean activation

now Activate software version

vbond# request software activate 20.15.4.1

This will reboot the node with the activated version.

Are you sure you want to proceed? [yes,NO] yes

Broadcast message from root@vbond (console) (Tue Feb 28 01:05:59 2023):

Tue Feb 28 01:05:59 UTC 2023: The system is going down for reboot NOW

vsmart# request software activate ?

Description: Display software versions

Possible completions:

20.12.6

20.15.4.1

clean Clean activation

now Activate software version

vsmart# request software activate 20.15.4.1

This will reboot the node with the activated version.

Are you sure you want to proceed? [yes,NO] yes

Broadcast message from root@vsmart (console) (Tue Feb 28 01:13:44 2023):

Tue Feb 28 01:13:44 UTC 2023: The system is going down for reboot NOW!

Note: Each controller activates the new image and reboots itself.

To confirm that the new software version is active, issue these commands:

vmanage# show version

20.15.4.1

vmanage# show software

VERSION ACTIVE DEFAULT PREVIOUS CONFIRMED TIMESTAMP

---------------------------------------------------------------------------

20.12.6 false true true - 2023-02-01T22:25:24-00:00

20.15.4.1 true false false auto 2023-02-28T01:05:14-00:00

vbond# show version

20.15.4.1

vbond# show software

VERSION ACTIVE DEFAULT PREVIOUS CONFIRMED TIMESTAMP

--------------------------------------------------------------------------

20.12.6 false true true - 2022-10-01T00:30:40-00:00

20.15.4.1 true false false - 2023-02-28T01:09:05-00:00

vsmart# show version

20.15.4.1

vsmart# show software

VERSION ACTIVE DEFAULT PREVIOUS CONFIRMED TIMESTAMP

--------------------------------------------------------------------------

20.12.6 false true true - 2022-10-01T00:31:34-00:00

20.15.4.1 true false false - 2023-02-28T01:16:36-00:00

Step 3. Set the Default Software Version

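You can set a software image as the default image on a Cisco SD-WAN device. It is recommended that you set the new image as the default after you verify that the software works as expected on the device and in the network.

If you perform a factory reset on the device, the device boots with the image that is set as the default.

Note: Setting the new version as the default is recommended because vManage otherwise boots the old (default) version if it reboots. That amounts to a downgrade from the new major release to an older one. This is not supported on vManage and can corrupt the database.

Note: This procedure does not reboot the controllers.

To set the software version as the default, issue this command on each controller: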

vmanage# request software set-default ?

Possible completions:

20.12.6

20.15.4.1

cancel Cancel this operation

start-at Schedule start.

| Output modifiers

<cr>

vmanage# request software set-default 20.15.4.1

status mkdefault 20.15.4.1: successful

vbond# request software set-default ?

Possible completions:

20.12.6

20.15.4.1

cancel Cancel this operation

start-at Schedule start.

| Output modifiers

<cr>

vbond# request software set-default 20.15.4.1

status mkdefault 20.15.4.1: successful

vsmart# request software set-default ?

Possible completions:

20.12.6

20.15.4.1

cancel Cancel this operation

start-at Schedule start.

| Output modifiers

<cr>

vsmart# request software set-default 20.15.4.1

status mkdefault 20.15.4.1: successful

To confirm that the new default version is set on the controllers, issue this command:

vmanage# show software

VERSION ACTIVE DEFAULT PREVIOUS CONFIRMED TIMESTAMP

---------------------------------------------------------------------------

20.12.6 false false true - 2023-02-01T22:25:24-00:00

20.15.4.1 true true false auto 2023-02-28T01:05:14-00:00

vbond# show software

VERSION ACTIVE DEFAULT PREVIOUS CONFIRMED TIMESTAMP

--------------------------------------------------------------------------

20.12.6 false false true - 2022-10-01T00:30:40-00:00

20.15.4.1 true true false - 2023-02-28T01:09:05-00:00

vsmart# show software

VERSION ACTIVE DEFAULT PREVIOUS CONFIRMED TIMESTAMP

--------------------------------------------------------------------------

20.12.6 false false true - 2022-10-01T00:31:34-00:00

20.15.4.1 true true false - 2023-02-28T01:16:36-00:00

Upgrade a vManage or vManage Cluster with Disaster Recovery Enabled

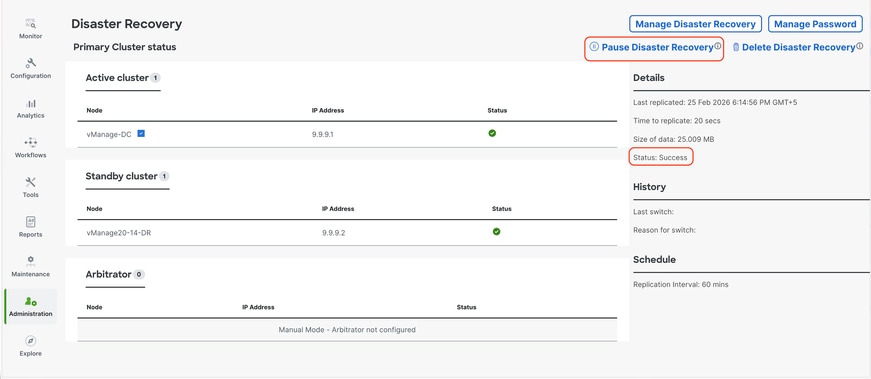

For a vManage or vManage cluster with disaster recovery enabled:

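Confirm that no disaster recovery replication is in progress. Navigate to Administration > Disaster Recovery, and ensure that the status is Success and not a transient state such as Import Pending, Export Pending, or Download Pending. You must pause disaster recovery on the currently active vManage.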

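If the status is not Success, wait until it shows Success. If it stays in another state for longer than expected (more than 1 hour, depending on the replication interval setting), contact Cisco TAC to make sure replication completes successfully, and then pause disaster recovery.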
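The wait, pause, or escalate decision can be written down as a small rule so that every operator applies it the same way. This is a minimal sketch. `dr_next_action` is hypothetical, and the status strings mirror the labels named in this section. The 60-minute limit stands in for the "more than 1 hour" guidance, and your replication interval may call for a longer limit.

```python
def dr_next_action(status, minutes_in_state, limit_minutes=60):
    """Decide the next step before upgrading a DR-enabled vManage.

    status: replication status shown under Administration > Disaster Recovery.
    minutes_in_state: how long the status has been unchanged.
    limit_minutes: escalation threshold; 60 reflects "more than 1 hour" and may
    need to be longer for long replication intervals.
    """
    if status == "Success":
        return "pause disaster recovery"
    if minutes_in_state > limit_minutes:
        return "contact Cisco TAC"
    return "wait"  # for example Import Pending, Export Pending, Download Pending


for status, minutes in [("Export Pending", 10), ("Import Pending", 90), ("Success", 0)]:
    print(status, "->", dr_next_action(status, minutes))
```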

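First, pause disaster recovery and ensure that the task succeeds. Then upgrade the active vManage with the steps described earlier in this document.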

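Caution: For a standalone active vManage, you can use the vManage UI to install and activate the new software. For an active vManage cluster, the recommended method is to install the software from the vManage UI and activate it from the vManage CLI with request software activate <version>, as described in the section Upgrade SD-WAN Controllers via CLI.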

For the standby vManage or vManage cluster, install and activate the software from the CLI of each vManage node.

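Post-Upgrade Validation Checks

- Verify the software version: Confirm that all controllers run the expected software version.

- Check SD-WAN Manager services: Ensure that all services on the SD-WAN Manager instances are operational.

- Verify control connections between controllers: Confirm that control connections between all controllers are established and stable.

- Confirm policy activation: Verify that the policy is active on SD-WAN Manager.

- Check control connection distribution: Ensure that control connections are distributed correctly across all SD-WAN Manager nodes. Navigate to Monitor > Network, and review the Control column.

- Site-level post-upgrade tests: Run these checks on all sites where you ran the pre-upgrade checks:

  - Control connections and BFD sessions:

    show sdwan control connections

    show sdwan bfd sessions

  - Route validation:

    show ip route

    show ip route vrf <vrf_id>

    show sdwan omp routes vpn <vpn_id>

  - Data center reachability: Verify connectivity to data center services.

  - Template synchronization: Confirm that the device templates are attached and in sync on the devices after the upgrade.

  - Policy validation from the controller:

    show sdwan policy from-controller

  - User acceptance testing: Run user tests on the migrated sites to validate application functionality.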

Rollback Plan

vBond and vSmart Rollback Plan

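If you find unexpected issues with the validator (vBond) or controller (vSmart) after the upgrade, revert to the previous software version by activating the older image on the affected device.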

vSmart# request software activate <older image version>

vBond# request software activate <older image version>

vManage Rollback Plan

If unexpected issues occur with SD-WAN Manager (vManage) after the upgrade, restore the system from the snapshot taken before the upgrade.

Caution: vManage does not support downgrading to a previous version through the CLI.

Troubleshoot

1. If the GUI stays down for a long time after activation and is no longer reachable, this output helps find the root cause:

vmanage# request nms application-server status

NMS application server

Enabled: true <<<<<<<<<<< "false"

Status: running PID:26470 for 22279s <<<<<<<<<< "not running"

If the application server shows Enabled as false and Status as not running, issue this command to recover the GUI:

vmanage# request nms application-server restart
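If you collect this output from several vManage nodes, a small parser can list the services that need attention. This minimal sketch assumes the block layout shown here: a service name line followed by `Enabled:` and `Status:` lines. `parse_nms_status` and `services_needing_attention` are hypothetical. The sketch flags services that are enabled but not running, and it always flags the application server if it is down. Disabled services, such as the statistics database in the request nms all status sample output in this section, are not flagged.

```python
def parse_nms_status(output):
    """Parse `request nms ... status` output into {service: {"enabled", "running"}}."""
    services = {}
    current = None
    for raw_line in output.splitlines():
        line = raw_line.strip()
        if not line or "#" in line:
            continue  # skip blank lines and CLI prompt lines
        key, sep, value = line.partition(":")
        if not sep:
            current = line  # a line without a colon starts a new service block
            services[current] = {"enabled": False, "running": False}
        elif current is not None and key == "Enabled":
            services[current]["enabled"] = value.split()[:1] == ["true"]
        elif current is not None and key == "Status":
            services[current]["running"] = value.strip().startswith("running")
        # other "key: value" lines, such as metrics status, are ignored
    return services


def services_needing_attention(services, always_required=("NMS application server",)):
    """Services that are down although enabled, or that must always run."""
    return sorted(
        name for name, state in services.items()
        if not state["running"] and (state["enabled"] or name in always_required)
    )


SAMPLE = """NMS application server
Enabled: false
Status: not running
NMS statistics database
Enabled: false
Status: not running
NMS service proxy
Enabled: true
Status: running PID:30888 for 819s
"""
print(services_needing_attention(parse_nms_status(SAMPLE)))  # ['NMS application server']
```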

2. To verify the status of all NMS services, issue this command:

vmanage# request nms all status

NMS service proxy

Enabled: true

Status: running PID:30888 for 819s

NMS service proxy rate limit

Enabled: true

Status: running PID:32029 for 812s

NMS application server

Enabled: true

Status: running PID:30834 for 819s

NMS configuration database

Enabled: true

Status: running PID:28321 for 825s

Native metrics status: ENABLED

Server-load metrics status: ENABLED

NMS coordination server

Enabled: true

Status: running PID:16814 for 535s

NMS messaging server

Enabled: true

Status: running PID:32561 for 799s

NMS statistics database

Enabled: false

Status: not running

NMS data collection agent

Enabled: true

Status: running PID:31051 for 824s

NMS CloudAgent v2

Enabled: true

Status: running PID:31902 for 817s

NMS cloud agent

Enabled: true

Status: running PID:18517 for 1183s

NMS SDAVC server

Enabled: false

Status: not running

NMS SDAVC gateway

Enabled: false

Status: not running

vManage Device Data Collector

Enabled: true

Status: running PID:3709 for 767s

NMS OLAP database

Enabled: true

Status: running PID:18167 for 521s

vManage Reporting

Enabled: true

Status: running PID:30015 for 827s

3. To verify that the TCP handshakes complete, issue this command:

vmanage# request nms all diagnostics
NMS service server
Pinging vManage node on localhost ...
Starting Nping 0.7.80 ( https://nmap.org/nping ) at 2026-02-24 06:17 UTC
SENT (0.0014s) Starting TCP Handshake > localhost:8443 (127.0.0.1:8443)
RCVD (0.0014s) Handshake with localhost:8443 (127.0.0.1:8443) completed
SENT (1.0025s) Starting TCP Handshake > localhost:8443 (127.0.0.1:8443)
RCVD (1.0025s) Handshake with localhost:8443 (127.0.0.1:8443) completed
SENT (2.0036s) Starting TCP Handshake > localhost:8443 (127.0.0.1:8443)
RCVD (2.0036s) Handshake with localhost:8443 (127.0.0.1:8443) completed
Max rtt: 0.012ms | Min rtt: 0.010ms | Avg rtt: 0.010ms
TCP connection attempts: 3 | Successful connections: 3 | Failed: 0 (0.00%)
Nping done: 1 IP address pinged in 2.00 seconds
Server network connections
--------------------------
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43682 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:43892 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 169.254.1.1:8443 169.254.1.8:52962 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:43738 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43738 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43828 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43836 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43866 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:52020 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:43828 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43896 ESTABLISHED 31081/envoy
tcp6 0 0 169.254.1.1:8443 169.254.1.8:51382 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:43726 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43810 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43756 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:43748 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 169.254.0.254:8443 151.186.182.23:35154 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43898 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43860 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:56308 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:8443 127.0.0.1:52028 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:43756 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:43712 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43834 ESTABLISHED 31081/envoy
tcp6 0 0 169.254.0.254:8443 151.186.182.23:52168 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:43810 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:43836 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:43852 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 169.254.0.254:8443 151.186.182.23:53030 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:43898 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43892 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:52028 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:44096 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:43896 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:43866 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:43730 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:43860 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:43878 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:43772 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:8443 127.0.0.1:52020 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:56308 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:43874 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43772 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:43826 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:52038 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43754 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43726 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:43782 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:43862 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:43834 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 169.254.1.1:8443 169.254.1.8:52964 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:44096 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:43754 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43874 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43712 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:43794 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43696 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:43696 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 169.254.1.1:8443 169.254.1.8:52978 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43748 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43730 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43852 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43878 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43826 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:43682 127.0.0.1:8443 ESTABLISHED 30944/java
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43794 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43862 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:52038 ESTABLISHED 31081/envoy
tcp6 0 0 127.0.0.1:8443 127.0.0.1:43782 ESTABLISHED 31081/envoy
NMS application server
Sending ICMP Echo to vManage on localhost ...
PING localhost.localdomain (127.0.0.1) 56(84) bytes of data.
64 bytes from localhost.localdomain (127.0.0.1): icmp_seq=1 ttl=64 time=0.022 ms
64 bytes from localhost.localdomain (127.0.0.1): icmp_seq=2 ttl=64 time=0.030 ms
64 bytes from localhost.localdomain (127.0.0.1): icmp_seq=3 ttl=64 time=0.027 ms
--- localhost.localdomain ping statistics ---
3 packets transmitted, 3 received, 0% packet loss, time 2034ms
rtt min/avg/max/mdev = 0.022/0.026/0.030/0.003 ms
Pinging vManage node on localhost ...
Starting Nping 0.7.80 ( https://nmap.org/nping ) at 2026-02-24 06:17 UTC
SENT (0.0015s) Starting TCP Handshake > localhost:8443 (127.0.0.1:8443)
RCVD (0.0015s) Handshake with localhost:8443 (127.0.0.1:8443) completed
SENT (1.0026s) Starting TCP Handshake > localhost:8443 (127.0.0.1:8443)
RCVD (1.0026s) Handshake with localhost:8443 (127.0.0.1:8443) completed
SENT (2.0037s) Starting TCP Handshake > localhost:8443 (127.0.0.1:8443)
RCVD (2.0037s) Handshake with localhost:8443 (127.0.0.1:8443) completed
Max rtt: 0.012ms | Min rtt: 0.009ms | Avg rtt: 0.010ms
TCP connection attempts: 3 | Successful connections: 3 | Failed: 0 (0.00%)
Nping done: 1 IP address pinged in 2.00 seconds
Disk I/O statistics for vManage storage
---------------------------------------
avg-cpu: %user %nice %system %iowait %steal %idle
1.63 0.00 0.37 0.06 0.00 97.93
Device tps kB_read/s kB_wrtn/s kB_dscd/s kB_read kB_wrtn kB_dscd
nvme1n1 24.49 74.59 913.44 0.00 2717198 33273456 0
NMS configuration database
Checking cluster connectivity for ports 7687,7474 ...
Pinging vManage node 0 on 169.254.1.5:7687,7474...
Starting Nping 0.7.80 ( https://nmap.org/nping ) at 2026-02-24 06:17 UTC
SENT (0.0013s) Starting TCP Handshake > 169.254.1.5:7474
RCVD (0.0013s) Handshake with 169.254.1.5:7474 completed
SENT (1.0024s) Starting TCP Handshake > 169.254.1.5:7687
RCVD (1.0024s) Handshake with 169.254.1.5:7687 completed
SENT (2.0035s) Starting TCP Handshake > 169.254.1.5:7474
RCVD (2.0035s) Handshake with 169.254.1.5:7474 completed
SENT (3.0046s) Starting TCP Handshake > 169.254.1.5:7687
RCVD (3.0046s) Handshake with 169.254.1.5:7687 completed
SENT (4.0057s) Starting TCP Handshake > 169.254.1.5:7474
RCVD (4.0058s) Handshake with 169.254.1.5:7474 completed
SENT (5.0069s) Starting TCP Handshake > 169.254.1.5:7687
RCVD (5.0069s) Handshake with 169.254.1.5:7687 completed
Max rtt: 0.021ms | Min rtt: 0.010ms | Avg rtt: 0.013ms
TCP connection attempts: 6 | Successful connections: 6 | Failed: 0 (0.00%)
Nping done: 1 IP address pinged in 5.01 seconds
Server network connections
--------------------------
tcp 0 0 169.254.1.5:7687 169.254.1.1:59650 ESTABLISHED 30148/java
tcp 0 0 169.254.1.5:7687 169.254.1.1:49998 ESTABLISHED 30148/java
tcp 0 0 169.254.1.5:7687 169.254.1.13:55794 ESTABLISHED 30148/java
tcp 0 0 169.254.1.5:7687 169.254.1.1:35374 ESTABLISHED 30148/java
tcp 0 0 169.254.1.5:7687 169.254.1.1:40100 ESTABLISHED 30148/java
tcp 0 0 169.254.1.5:7687 169.254.1.1:52748 ESTABLISHED 30148/java
tcp 0 0 169.254.1.5:7687 169.254.1.1:35380 ESTABLISHED 30148/java
tcp 0 0 169.254.1.5:7687 169.254.1.1:40618 ESTABLISHED 30148/java
tcp 0 0 169.254.1.5:7687 169.254.1.1:59658 ESTABLISHED 30148/java
tcp 0 0 169.254.1.5:7687 169.254.1.13:55782 ESTABLISHED 30148/java
Connecting to localhost...
+------------------------------------------------------------------------------------+
| type | row | attributes[row]["value"] |
+------------------------------------------------------------------------------------+
| "StoreSizes" | "TotalStoreSize" | 156365978 |
| "PageCache" | "Flush" | 68694 |
| "PageCache" | "EvictionExceptions" | 0 |
| "PageCache" | "UsageRatio" | 0.1795189950980392 |
| "PageCache" | "Eviction" | 3186 |
| "PageCache" | "HitRatio" | 1.0 |
| "ID Allocations" | "NumberOfRelationshipIdsInUse" | 8791 |
| "ID Allocations" | "NumberOfPropertyIdsInUse" | 47067 |
| "ID Allocations" | "NumberOfNodeIdsInUse" | 4450 |
| "ID Allocations" | "NumberOfRelationshipTypeIdsInUse" | 77 |
| "Transactions" | "LastCommittedTxId" | 26470 |
| "Transactions" | "NumberOfOpenTransactions" | 1 |
| "Transactions" | "NumberOfOpenedTransactions" | 109412 |
| "Transactions" | "PeakNumberOfConcurrentTransactions" | 10 |
| "Transactions" | "NumberOfCommittedTransactions" | 106913 |
+------------------------------------------------------------------------------------+
15 rows
ready to start consuming query after 126 ms, results consumed after another 2 ms
Completed
Connecting to localhost...
Displaying the Neo4j Cluster Status
+---------------------------------------------------------------------------------------------------------------------------------+
| name | aliases | access | address | role | requestedStatus | currentStatus | error | default | home |
+---------------------------------------------------------------------------------------------------------------------------------+
| "neo4j" | [] | "read-write" | "localhost:7687" | "standalone" | "online" | "online" | "" | TRUE | TRUE |
| "system" | [] | "read-write" | "localhost:7687" | "standalone" | "online" | "online" | "" | FALSE | FALSE |
+---------------------------------------------------------------------------------------------------------------------------------+
2 rows
ready to start consuming query after 3 ms, results consumed after another 1 ms
Completed
Total disk space used by configuration-db: 63M .
Detailed disk space usage of configuration-db:
0 database_lock
8.0K neostore
48K neostore.counts.db
1.8M neostore.indexstats.db
48K neostore.labelscanstore.db
8.0K neostore.labeltokenstore.db
40K neostore.labeltokenstore.db.id
32K neostore.labeltokenstore.db.names
40K neostore.labeltokenstore.db.names.id
72K neostore.nodestore.db
48K neostore.nodestore.db.id
8.0K neostore.nodestore.db.labels
40K neostore.nodestore.db.labels.id
1.9M neostore.propertystore.db
312K neostore.propertystore.db.arrays
48K neostore.propertystore.db.arrays.id
72K neostore.propertystore.db.id
8.0K neostore.propertystore.db.index
48K neostore.propertystore.db.index.id
32K neostore.propertystore.db.index.keys
40K neostore.propertystore.db.index.keys.id
4.2M neostore.propertystore.db.strings
104K neostore.propertystore.db.strings.id
16K neostore.relationshipgroupstore.db
48K neostore.relationshipgroupstore.db.id
48K neostore.relationshipgroupstore.degrees.db
296K neostore.relationshipstore.db
48K neostore.relationshipstore.db.id
48K neostore.relationshiptypescanstore.db
8.0K neostore.relationshiptypestore.db
40K neostore.relationshiptypestore.db.id
8.0K neostore.relationshiptypestore.db.names
40K neostore.relationshiptypestore.db.names.id
16K neostore.schemastore.db
48K neostore.schemastore.db.id
11M profiles
44M schema
##############################################
Running schema violation pre-check script
WARNING: sun.reflect.Reflection.getCallerClass is not supported. This will impact performance.
Validating Schema from the configuration-db
Successfully validated configuration-db schema written to file /opt/data/containers/mounts/upgrade-coordinator/schema.json
Contents of /opt/data/containers/mounts/upgrade-coordinator/schema.json:
{
"check_name": "Validating configuration-db admin names",
"check_result": "SUCCESSFUL",
"check_analysis": "Successfully validated configuration-db schema",
"check_action": ""
}
##############################################
##############################################
Running quarantine check
WARNING: sun.reflect.Reflection.getCallerClass is not supported. This will impact performance.
Check if Neo4j Nodes are Quarantined
None of the neo4j nodes is quarantined
##############################################
##############################################
Checking High Direct Memory Usage in Neo4j
High Direct Memory Usage in Neo4j not found
NMS data collection agent
Checking data-collection-agent status
------------------------
data-collection-agent container exists
Checking Data collection agent processes status
------------------------
Data collection agent parent processs ID 12
Data collection agent process ID 104
Data collection bulk process ID 97
Data collection rest process ID 98
Data collection monitor process ID 99
Checking vmanage access
------------------------
Successfully logged into vmanage.
Checking DCS Push Status
------------------------
vAnalytics not enabled.
NMS coordination server
Checking cluster connectivity for ports 2181 ...
Pinging vManage node 0 on 169.254.1.4:2181...
Starting Nping 0.7.80 ( https://nmap.org/nping ) at 2026-02-24 06:18 UTC
SENT (0.0014s) Starting TCP Handshake > 169.254.1.4:2181
RCVD (0.0014s) Handshake with 169.254.1.4:2181 completed
SENT (1.0025s) Starting TCP Handshake > 169.254.1.4:2181
RCVD (1.0025s) Handshake with 169.254.1.4:2181 completed
SENT (2.0036s) Starting TCP Handshake > 169.254.1.4:2181
RCVD (2.0036s) Handshake with 169.254.1.4:2181 completed
Max rtt: 0.012ms | Min rtt: 0.010ms | Avg rtt: 0.010ms
TCP connection attempts: 3 | Successful connections: 3 | Failed: 0 (0.00%)
Nping done: 1 IP address pinged in 2.00 seconds
Server network connections
--------------------------
tcp 0 0 169.254.1.4:2181 169.254.1.1:56716 ESTABLISHED 16814/java
NMS container manager
Checking container-manager status
Listing all images
------------------------
REPOSITORY TAG IMAGE ID CREATED SIZE
sdwan/host-agent 1.0.1 ca71fd3fe4a2 5 months ago 131MB
sdwan/cluster-oracle 1.0.1 8ef918482315 5 months ago 294MB
sdwan/data-collection-agent 1.0.1 4bf055257027 5 months ago 157MB
sdwan/application-server 19.1.0 6a9624dc3125 5 months ago 508MB
sdwan/configuration-db 4.4.38 700fe6e56199 5 months ago 472MB
sdwan/coordination-server 3.7.1 a04198d518b3 5 months ago 606MB
sdwan/olap-db 23.3.13.6 a17712731d5f 5 months ago 494MB
sdwan/device-data-collector 1.0.0 515f2793ee43 5 months ago 116MB
sdwan/service-proxy 1.27.2 5174f58b97b1 5 months ago 105MB
sdwan/messaging-server 0.20.0 9560cd4b7c42 5 months ago 105MB
sdwan/statistics-db 7.17.6 b9f8ab30d647 5 months ago 589MB
cloudagent-v2 3358cee09e99 66063bed474e 5 months ago 458MB
sdwan/upgrade-coordinator 2.0.0 969cd2f1626a 5 months ago 93.3MB
sdwan/vault 1.0.1 0883c094affc 6 months ago 511MB
sdwan/support-tools latest 022aebae12e6 13 months ago 143MB
sdavc 4.6.0 730e83b39087 17 months ago 602MB
sdavc-gw 4.6.0 84083ed484ba 18 months ago 369MB
sdwan/reporting latest 509ec99584fd 19 months ago 772MB
sdwan/ratelimit latest 719f624e9268 2 years ago 45.7MB
Listing all containers
------------------------
CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES
c676b358b12d sdwan/olap-db:23.3.13.6 "/usr/bin/docker-ini…" 10 hours ago Up 10 hours (healthy) 127.0.0.1:8123->8123/tcp olap-db
627c1dcf16fa sdwan/coordination-server:3.7.1 "/docker-entrypoint.…" 10 hours ago Up 10 hours (healthy) 127.0.0.1:2181->2181/tcp, 127.0.0.1:2888->2888/tcp, 127.0.0.1:3888->3888/tcp coordination-server
9299443ff7a1 sdwan/messaging-server:0.20.0 "/entrypoint.sh" 10 hours ago Up 10 hours (healthy) 127.0.0.1:4222->4222/tcp, 127.0.0.1:6222->6222/tcp, 127.0.0.1:8222->8222/tcp messaging-server
0c5236ee911b sdwan/ratelimit:latest "/usr/local/bin/rate…" 10 hours ago Up 10 hours (healthy) 6379/tcp, 127.0.0.1:8460-8462->8460-8462/tcp ratelimit
094166df1cd9 cloudagent-v2:3358cee09e99 "./entrypoint.sh" 10 hours ago Up 10 hours 127.0.0.1:9051-9052->9051-9052/tcp cloudagent-v2
8f1287c11840 sdwan/reporting:latest "/sbin/tini -g -- py…" 10 hours ago Up 10 hours 80/tcp, 127.0.0.1:9080->9080/tcp reporting
66a46485cfab sdwan/vault:1.0.1 "docker-entrypoint.s…" 10 hours ago Up 10 hours (healthy) 8200/tcp, 127.0.0.1:8201->8201/tcp vault
ccf5336112b6 sdwan/data-collection-agent:1.0.1 "/usr/bin/docker-ini…" 10 hours ago Up 10 hours (healthy) data-collection-agent
079ecfe36482 sdwan/service-proxy:1.27.2 "/entrypoint.sh" 10 hours ago Up 10 hours (healthy) service-proxy
ec1b50457302 sdwan/configuration-db:4.4.38 "/usr/bin/docker-ini…" 10 hours ago Up 10 hours (healthy) 127.0.0.1:5000->5000/tcp, 127.0.0.1:6000->6000/tcp, 127.0.0.1:6362->6362/tcp, 127.0.0.1:6372->6372/tcp, 127.0.0.1:7000->7000/tcp, 127.0.0.1:7473-7474->7473-7474/tcp, 127.0.0.1:7687-7688->7687-7688/tcp configuration-db
f54ccdcf7a14 sdwan/device-data-collector:1.0.0 "/bin/sh -c /vMDDC/v…" 10 hours ago Up 10 hours (healthy) 127.0.0.1:8129->8129/tcp device-data-collector
605f986dc9f1 sdwan/application-server:19.1.0 "/sbin/tini -g -- /e…" 10 hours ago Up 10 hours (healthy) application-server
50377e02b120 sdwan/host-agent:1.0.1 "/entrypoint.sh pyth…" 10 hours ago Up 10 hours (healthy) 127.0.0.1:9099->9099/tcp host-agent
ca36faf52f36 sdwan/cluster-oracle:1.0.1 "/entrypoint.sh java…" 10 hours ago Up 10 hours (healthy) 127.0.0.1:9090->9090/tcp cluster-oracle
Docker info
------------------------
Client:
Context: default
Debug Mode: false
Server:
Containers: 14
Running: 14
Paused: 0
Stopped: 0
Images: 19
Server Version: 20.10.25-ce
Storage Driver: overlay2
Backing Filesystem: extfs
Supports d_type: true
Native Overlay Diff: true
userxattr: false
Logging Driver: local
Cgroup Driver: cgroupfs
Cgroup Version: 1
Plugins:
Volume: local
Network: bridge host ipvlan macvlan null overlay
Log: awslogs fluentd gcplogs gelf journald json-file local logentries splunk syslog
Swarm: inactive
Runtimes: io.containerd.runc.v2 io.containerd.runtime.v1.linux runc
Default Runtime: runc
Init Binary: docker-init
containerd version: 1e1ea6e986c6c86565bc33d52e34b81b3e2bc71f.m
runc version: v1.1.4-8-g974efd2d-dirty
init version: b9f42a0-dirty
Security Options:
seccomp
Profile: default
Kernel Version: 5.15.146-yocto-standard
Operating System: Linux
OSType: linux
Architecture: x86_64
CPUs: 16
Total Memory: 30.58GiB
Name: vmanage_1
ID: GHLX:JUWP:Z7JP:J3UX:MOF7:ZY7G:MSLS:E7BI:3LKT:2WRU:K2HZ:YWL7
Docker Root Dir: /var/lib/nms/docker
Debug Mode: false
Registry: https://index.docker.io/v1/
Labels:
Experimental: false
Insecure Registries:
127.0.0.0/8
Live Restore Enabled: false
WARNING: No cpu cfs quota support
WARNING: No cpu cfs period support
WARNING: No blkio throttle.read_bps_device support
WARNING: No blkio throttle.write_bps_device support
WARNING: No blkio throttle.read_iops_device support
WARNING: No blkio throttle.write_iops_device support
NMS SDAVC server is disabled on this vmanage node
NMS Device Data Collector
Checking Device Data Collector Port....
Port 8129 is reachable
Current Health Status:- true
Getting docker stats of Device Data Collector container ....
CONTAINER ID NAME CPU % MEM USAGE / LIMIT MEM % NET I/O BLOCK I/O PIDS
f54ccdcf7a14 device-data-collector 0.00% 9.773MiB / 30.58GiB 0.03% 1.85MB / 876kB 0B / 0B 21
NMS OLAP database
Checking cluster connectivity for ports 9000,8123,9009 ...
Pinging vManage node 0 on 169.254.1.10:9000,8123,9009...
Starting Nping 0.7.80 ( https://nmap.org/nping ) at 2026-02-24 06:18 UTC
SENT (0.0013s) Starting TCP Handshake > 169.254.1.10:8123
RCVD (0.0013s) Handshake with 169.254.1.10:8123 completed
SENT (1.0024s) Starting TCP Handshake > 169.254.1.10:9000
RCVD (1.0024s) Handshake with 169.254.1.10:9000 completed
SENT (2.0036s) Starting TCP Handshake > 169.254.1.10:9009
RCVD (2.0036s) Handshake with 169.254.1.10:9009 completed
SENT (3.0047s) Starting TCP Handshake > 169.254.1.10:8123
RCVD (3.0047s) Handshake with 169.254.1.10:8123 completed
SENT (4.0058s) Starting TCP Handshake > 169.254.1.10:9000
RCVD (4.0058s) Handshake with 169.254.1.10:9000 completed
SENT (5.0069s) Starting TCP Handshake > 169.254.1.10:9009
RCVD (5.0070s) Handshake with 169.254.1.10:9009 completed
SENT (6.0081s) Starting TCP Handshake > 169.254.1.10:8123
RCVD (6.0081s) Handshake with 169.254.1.10:8123 completed
SENT (7.0092s) Starting TCP Handshake > 169.254.1.10:9000
RCVD (7.0092s) Handshake with 169.254.1.10:9000 completed
SENT (8.0103s) Starting TCP Handshake > 169.254.1.10:9009
RCVD (8.0103s) Handshake with 169.254.1.10:9009 completed
Max rtt: 0.014ms | Min rtt: 0.008ms | Avg rtt: 0.009ms
TCP connection attempts: 9 | Successful connections: 9 | Failed: 0 (0.00%)
Nping done: 1 IP address pinged in 8.01 seconds
Server network connections
--------------------------
tcp 0 0 169.254.1.10:8123 169.254.1.1:38848 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38736 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38864 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38826 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:32996 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38792 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38720 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38704 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38790 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38740 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38786 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38576 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38766 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38754 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38828 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38676 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38770 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38620 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:32768 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38820 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38574 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38878 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38804 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38692 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38808 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38844 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:32984 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:60970 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:60974 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:51222 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38712 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38662 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:60986 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38598 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38640 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38652 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:32982 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38572 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38630 ESTABLISHED 18258/clickhouse-se
tcp 0 0 169.254.1.10:8123 169.254.1.1:38606 ESTABLISHED 18258/clickhouse-se
Mode: SingleTenant
----------------------------
Node health state
----------------------------
Server status: [OK]
Replica status: [OK]
Database summary
----------------------------
database count: 5
table count in db(INFORMATION_SCHEMA) : 4
table count in db(backup) : 0
table count in db(default) : 61
table count in db(information_schema) : 4
table count in db(system) : 82
Tables in database default
----------------------------
- aggregated_apps_dpi_app_60min_summary_view_default
- aggregated_apps_dpi_app_summary_default
- aggregated_apps_dpi_site_5min_summary_view_default
- aggregated_apps_dpi_site_summary_default
- aggregated_apps_dpi_stats_default
- aggregated_apps_dpi_summary_default
- alarm_default
- api_telemetry
- api_telemetry_metadata
- app_hosting_interface_stats_default
- app_hosting_stats_default
- approute_stats_default
- approute_stats_routing_summary_default
- approute_stats_transport_summary_default
- art_stats_default
- audit_log_default
- bridge_interface_stats_default
- bridge_mac_stats_default
- cloudx_stats_default
- device_configuration_default
- device_events_default
- device_health_stats_default
- device_stats_files_default
- device_system_status_stats_default
- dpi_stats_default
- eio_lte_stats_default
- flow_log_stats_default
- fwall_stats_default
- interface_stats_default
- ips_alert_stats_default
- nwa_default
- nwapending_default
- nwpi_agg_metrics_default
- nwpi_app_default
- nwpi_domain_agg_trend_default
- nwpi_domain_default
- nwpi_flow_default
- nwpi_flow_event_default
- nwpi_flow_metric_default
- nwpi_hops_of_flow_default
- nwpi_routing_default
- nwpi_te_default
- nwpi_time_series_default
- nwpi_trace_and_task_default
- pagination_request_info_default
- perf_mon_statistics_default
- perf_mon_summary_default
- perfmon_app_15min_summary_view_default
- perfmon_app_summary_default
- qos_stats_default
- sdra_stats_default
- site_health_stats_default
- sleofflinereport_default
- speed_test_default
- sul_stats_default
- tracker_stats_default
- umbrella_stats_default
- umtsrestevent_default
- urlf_stats_default
- vnf_stats_default
- wlan_client_info_stats_default
----------------------------
┌─parts.table──────────────────────────────┬──rows─┬─latest_modification─┬─disk_size──┬─primary_keys_size─┬─engine─────────────┬─bytes_size─┬─min_date───┬─max_date───┬─compressed_size─┬─uncompressed_size─┬─ratio─┐
│ device_system_status_stats_default │ 7238 │ 2026-02-24 06:13:12 │ 430.24 KiB │ 100.00 B │ MergeTree │ 440561 │ 2026-02-23 │ 2026-02-24 │ 0.00 B │ 0.00 B │ nan │
│ api_telemetry │ 34476 │ 2026-02-24 06:06:32 │ 306.22 KiB │ 264.00 B │ MergeTree │ 313574 │ tuple() │ tuple() │ 0.00 B │ 0.00 B │ nan │
│ audit_log_default │ 744 │ 2026-02-24 06:15:48 │ 200.16 KiB │ 48.00 B │ MergeTree │ 204967 │ 2026-02-23 │ 2026-02-24 │ 0.00 B │ 0.00 B │ nan │
│ device_events_default │ 4819 │ 2026-02-24 06:17:06 │ 189.26 KiB │ 245.00 B │ MergeTree │ 193802 │ 2026-02-23 │ 2026-02-24 │ 0.00 B │ 0.00 B │ nan │
│ interface_stats_default │ 7036 │ 2026-02-24 06:10:50 │ 117.07 KiB │ 104.00 B │ MergeTree │ 119879 │ 2026-02-23 │ 2026-02-24 │ 0.00 B │ 0.00 B │ nan │
│ alarm_default │ 408 │ 2026-02-23 21:48:41 │ 69.99 KiB │ 212.00 B │ ReplacingMergeTree │ 71671 │ 2026-02-23 │ 2026-02-23 │ 0.00 B │ 0.00 B │ nan │
│ api_telemetry_metadata │ 5342 │ 2026-02-24 06:01:04 │ 57.52 KiB │ 32.00 B │ MergeTree │ 58899 │ tuple() │ tuple() │ 0.00 B │ 0.00 B │ nan │
│ approute_stats_default │ 2540 │ 2026-02-24 06:10:20 │ 37.25 KiB │ 259.00 B │ MergeTree │ 38139 │ 2026-02-23 │ 2026-02-24 │ 0.00 B │ 0.00 B │ nan │
│ device_configuration_default │ 18 │ 2026-02-23 20:55:39 │ 33.97 KiB │ 51.00 B │ MergeTree │ 34787 │ 2026-02-23 │ 2026-02-23 │ 0.00 B │ 0.00 B │ nan │
│ device_health_stats_default │ 1463 │ 2026-02-24 06:15:00 │ 23.07 KiB │ 303.00 B │ ReplacingMergeTree │ 23626 │ 2026-02-23 │ 2026-02-24 │ 0.00 B │ 0.00 B │ nan │
│ site_health_stats_default │ 1413 │ 2026-02-24 06:15:01 │ 9.95 KiB │ 230.00 B │ ReplacingMergeTree │ 10185 │ 2026-02-23 │ 2026-02-24 │ 0.00 B │ 0.00 B │ nan │
│ approute_stats_routing_summary_default │ 70 │ 2026-02-23 20:30:17 │ 1.79 KiB │ 83.00 B │ MergeTree │ 1837 │ tuple() │ tuple() │ 0.00 B │ 0.00 B │ nan │
│ nwa_default │ 120 │ 2026-02-24 06:11:08 │ 1.69 KiB │ 32.00 B │ MergeTree │ 1734 │ 2026-02-23 │ 2026-02-24 │ 0.00 B │ 0.00 B │ nan │
│ approute_stats_transport_summary_default │ 18 │ 2026-02-23 20:30:17 │ 997.00 B │ 83.00 B │ MergeTree │ 997 │ tuple() │ tuple() │ 0.00 B │ 0.00 B │ nan │
└──────────────────────────────────────────┴───────┴─────────────────────┴────────────┴───────────────────┴────────────────────┴────────────┴────────────┴────────────┴─────────────────┴───────────────────┴───────┘
Application server stats
---------------------------------
STATISTICS
---------------------------------
Success: 20418
Fail: 0
CONN DOWN: 0
OOM: 0
ILL ARG: 0
---------------------------------
This action is not supported
vmanage_1#
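
You may want to compare runs of this diagnostics output, for example before and after each controller upgrade. To do that, you can save the output to a text file and scan it for the failure indicators it contains. The following Python script is a minimal sketch, not a Cisco tool. The functions `find_problems` and `main` are hypothetical, and the patterns assume the line formats shown in the sample output above. The script flags:

- Nping summaries that report failed TCP handshakes.
- A `check_result` other than `SUCCESSFUL`. The sample shows only `SUCCESSFUL`, so treating every other value as a failure is an assumption.
- An OLAP `Server status` or `Replica status` other than `[OK]`.
- Fewer running containers than total containers in the `docker info` summary.
- Container STATUS values such as `(unhealthy)`, `Exited (N)`, or `Restarting (N)`.
- Non-zero application server `Fail`, `CONN DOWN`, `OOM`, or `ILL ARG` counters.

```python
"""Hypothetical helper: scan saved vManage diagnostics output for failure indicators.

Assumes the text layout matches the sample output in this document.
This is an illustrative sketch, not a Cisco-provided tool.
"""
import re
import sys

# Nping summary, e.g. "TCP connection attempts: 9 | Successful connections: 9 | Failed: 0 (0.00%)"
NPING_SUMMARY = re.compile(
    r"TCP connection attempts: (\d+) \| Successful connections: (\d+) \| Failed: (\d+)"
)
# Pre-check JSON field, e.g. "check_result": "SUCCESSFUL"
CHECK_RESULT = re.compile(r'"check_result":\s*"([A-Za-z_]+)"')
# OLAP (ClickHouse) node health, e.g. "Server status: [OK]" or "Replica status: [OK]"
NODE_STATUS = re.compile(r"(Server|Replica) status: \[(\w+)\]")
# docker info summary, e.g. "Containers: 14 Running: 14" (same line or split across lines)
DOCKER_COUNTS = re.compile(r"Containers: (\d+)\s+Running: (\d+)")
# docker ps STATUS values that indicate a problem. "Up ... (healthy)" and a plain
# "Up ..." (image defines no health check) are deliberately not matched.
BAD_CONTAINER = re.compile(
    r"\(unhealthy\)|\(health: starting\)|\bExited \(\d+\)|\bRestarting \(\d+\)"
)
# Application server counters that are expected to stay at zero.
# "Fail:" does not match "Failed:" because the colon must follow immediately.
APP_COUNTERS = re.compile(r"\b(Fail|CONN DOWN|OOM|ILL ARG): (\d+)")


def find_problems(text: str) -> list[str]:
    """Return a human-readable list of failure indicators found in the text."""
    problems = []
    for attempts, ok, failed in NPING_SUMMARY.findall(text):
        if int(failed) or int(ok) != int(attempts):
            problems.append(f"Nping: {failed} of {attempts} TCP handshakes failed")
    for result in CHECK_RESULT.findall(text):
        # Assumption: any value other than SUCCESSFUL needs attention.
        if result != "SUCCESSFUL":
            problems.append(f"pre-check result: {result}")
    for role, state in NODE_STATUS.findall(text):
        if state != "OK":
            problems.append(f"OLAP {role.lower()} status: {state}")
    for total, running in DOCKER_COUNTS.findall(text):
        if int(total) != int(running):
            problems.append(f"docker: only {running} of {total} containers running")
    for match in BAD_CONTAINER.finditer(text):
        problems.append(f"container status: {match.group(0)}")
    for name, count in APP_COUNTERS.findall(text):
        if int(count):
            problems.append(f"application server {name}: {count}")
    return problems


def main(path: str) -> int:
    """Print each problem found in the saved output; return 1 if any, else 0."""
    with open(path, encoding="utf-8", errors="replace") as fh:
        problems = find_problems(fh.read())
    for problem in problems:
        print("FAIL:", problem)
    if not problems:
        print("No failure indicators found in", path)
    return 1 if problems else 0


if __name__ == "__main__" and len(sys.argv) == 2:
    sys.exit(main(sys.argv[1]))
```

For example, running `python3 check_diag.py diag.txt` (both file names are hypothetical) prints one `FAIL:` line per indicator and returns exit status 1 if any indicators are found. The script only reports indicators that are present in the text. If a check section is missing from the captured output, the script flags nothing for that section. Containers without a health check are also not treated as failures. In the listing above, `cloudagent-v2` and `reporting` are examples: they show only `Up 10 hours`. Use the script as a quick sanity check alongside AURA and the other pre-checks, not as a replacement for them.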

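Revision History

| Revision | Publish Date | Comments |
|---|---|---|
| 4.0 | 13-Oct-2023 | vManage Cluster Upgrade section |
| 3.0 | 11-Jul-2023 | Pre-Checks to Perform Before the Controller Upgrade |
| 2.0 | 27-Apr-2023 | Initial Release |
| 1.0 | 26-Apr-2023 | Initial Release |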

Contributed by Cisco Engineers

- Cesar Daniel Avila, Technical Consulting Engineer

- Ian Estrada, Technical Consulting Engineer
