Before you begin installation, confirm that your environment meets the following specific software and hardware requirements.

Attention: On HyperFlex M5 nodes in a 1GE topology, manually configure the port speed to 1000/full on all switch ports. See the Common Network Requirements.

VLAN Requirements

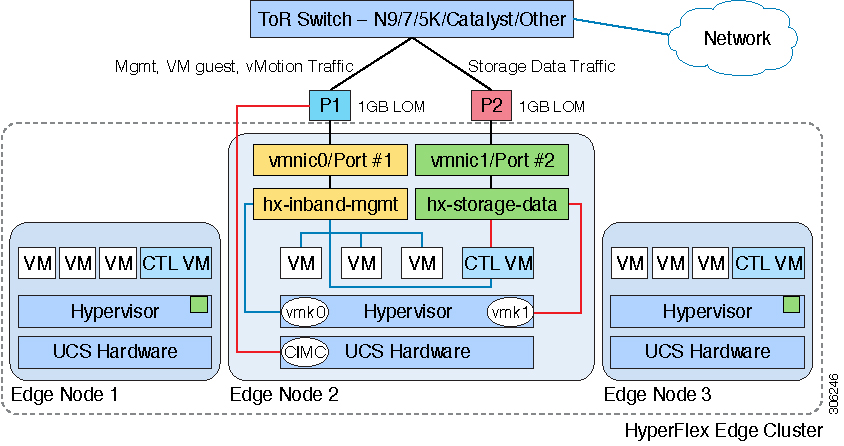

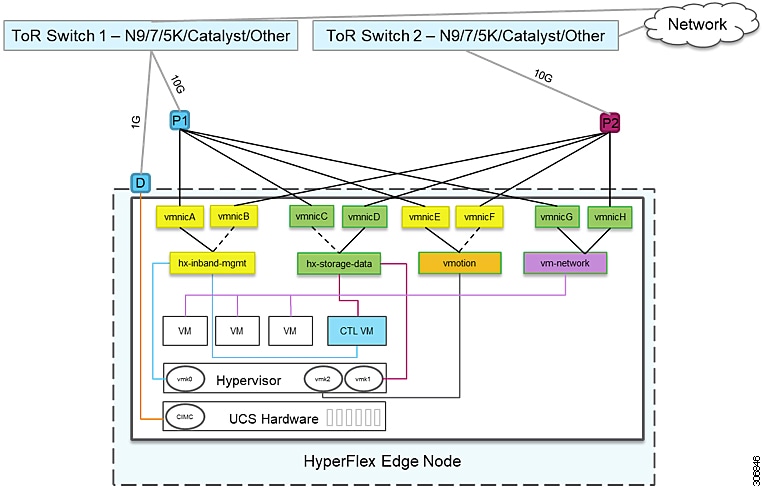

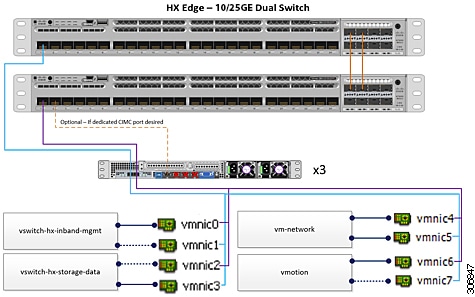

Single Switch Network Topology

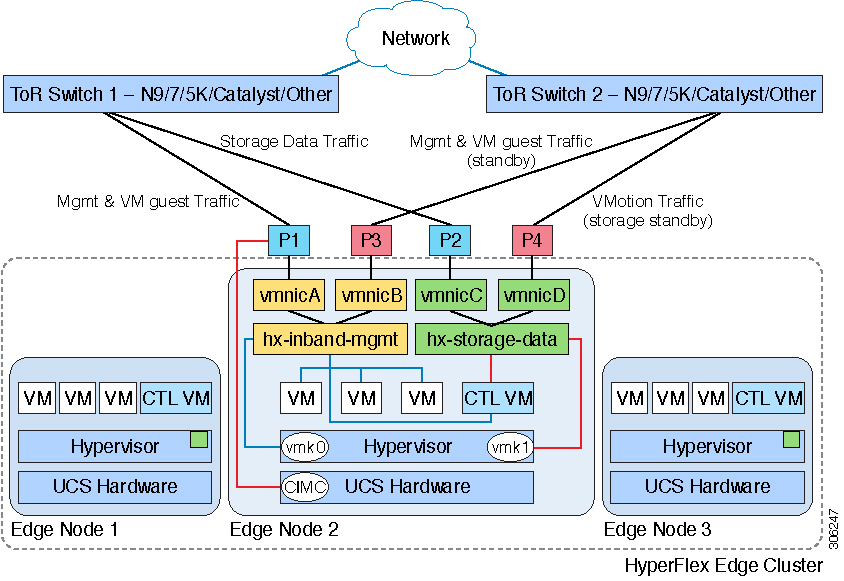

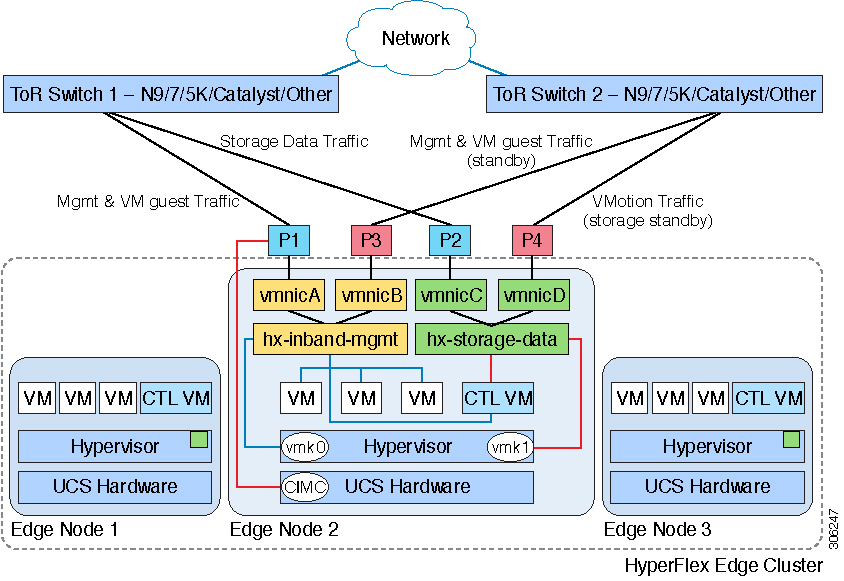

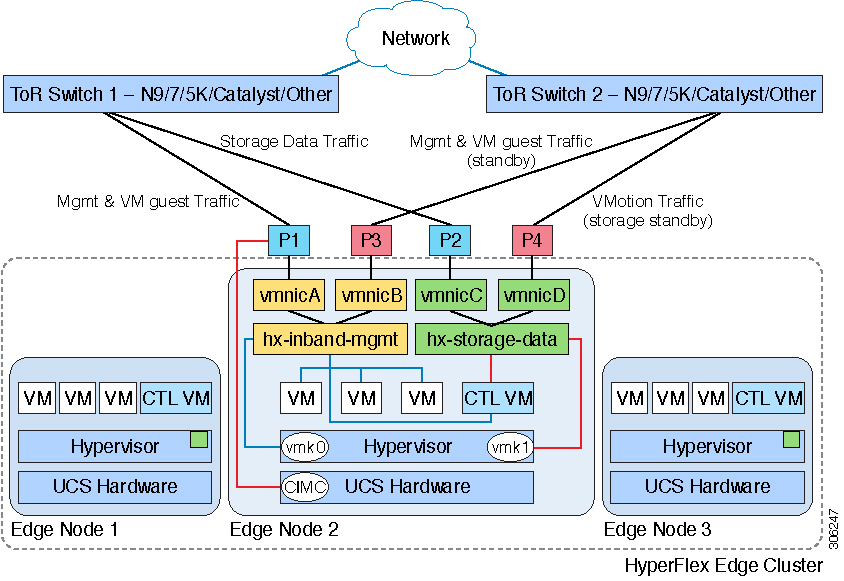

Dual Switch Network Topology

Use a separate subnet and VLAN for each of the following networks:

| Network | VLAN ID | Description |
|---|---|---|
| VLAN for VMware ESXi and Cisco HyperFlex management | | Used for management traffic among ESXi, HyperFlex, and VMware vCenter; must be routable. Note: This VLAN must have access to Intersight. |
| CIMC VLAN | | Can be the same as or different from the management VLAN. Note: This VLAN must have access to Intersight. |
| VLAN for HX storage traffic | | Used for storage traffic; requires only L2 connectivity. |
| VLAN for VMware vMotion | | Used for vMotion traffic, if applicable. Note: Can be the same as the management VLAN, but this is not recommended. |
| VLAN(s) for VM network(s) | | Used for VM/application networks. Note: Can be multiple VLANs, separated by VM port groups in ESXi. |
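You can check the VLAN rules in the table above before configuring the switches. The following minimal Python sketch is not a Cisco tool. The function name and parameters are hypothetical. It encodes only the rules stated in the table:

- a separate VLAN per network;
- the CIMC VLAN may share the management VLAN;
- vMotion may share the management VLAN, but this is not recommended.

It also checks the standard 802.1Q usable VLAN range of 1 to 4094.

```python
def check_vlan_plan(management, cimc, storage, vmotion, vm_networks=()):
    """Return warnings for a planned VLAN layout (an empty list means no issues found).

    Hypothetical helper: parameters are the VLAN IDs you intend to use.
    """
    warnings = []
    planned = {"management": management, "cimc": cimc, "storage": storage, "vmotion": vmotion}
    planned.update({f"vm_network_{i}": vid for i, vid in enumerate(vm_networks, start=1)})

    # 802.1Q reserves VLAN IDs 0 and 4095; usable IDs are 1-4094.
    for name, vid in planned.items():
        if not 1 <= vid <= 4094:
            warnings.append(f"{name}: VLAN {vid} is outside 1-4094")

    # The CIMC VLAN may be the same as the management VLAN.
    if cimc == management:
        del planned["cimc"]
    # vMotion may share the management VLAN, but this is not recommended.
    if vmotion == management:
        warnings.append("vmotion shares the management VLAN (allowed, not recommended)")
        del planned["vmotion"]

    # Every remaining network should have its own VLAN.
    seen = {}
    for name, vid in planned.items():
        if vid in seen:
            warnings.append(f"{name} reuses VLAN {vid} already assigned to {seen[vid]}")
        else:
            seen[vid] = name
    return warnings
```

For example, `check_vlan_plan(10, 10, 20, 10, [100])` reports only the vMotion warning, because the table permits the CIMC and management networks to share a VLAN.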

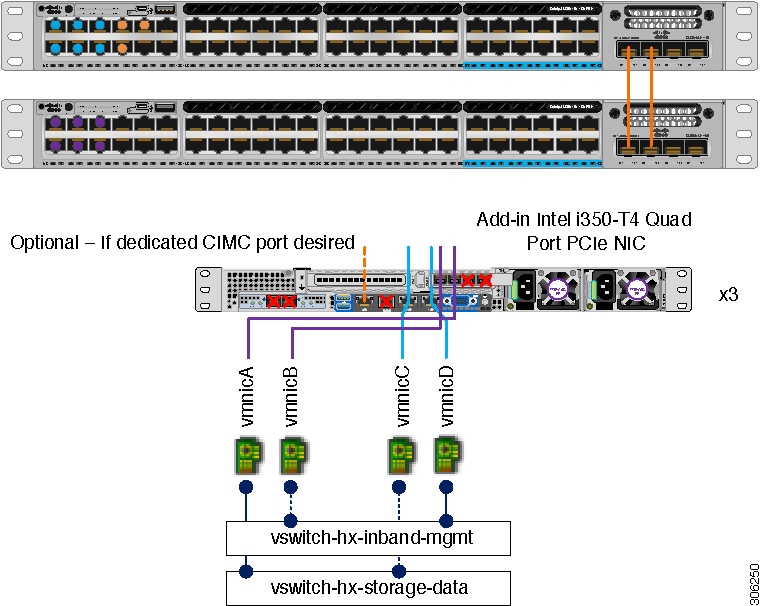

Inband versus Out-of-Band CIMC

This guide assumes inband CIMC in Shared LOM Ext mode. In this mode, CIMC management traffic is multiplexed with vSphere traffic onto the LOM ports. This reduces cabling, switch ports, and additional configuration.

Customers may instead use the dedicated CIMC management port for out-of-band access. In that case:

- Account for this third 1GE port when planning the upstream switch configuration.
- Set the CIMC to dedicated mode during CIMC configuration.

Follow the Cisco UCS C-Series documentation to configure the CIMC in dedicated NIC mode. Under NIC properties, set the NIC mode to dedicated before saving the configuration.

In either case, CIMC must have network access to Intersight.

Supported vCenter Topologies

Use the following table to determine the topology supported for vCenter.

| Topology | Description | Recommendation |
|---|---|---|
| Single vCenter | Virtual or physical vCenter that runs on an external server and is local to the site. A management rack-mount server can be used for this purpose. | Highly recommended |
| Centralized vCenter | vCenter that manages multiple sites across a WAN. | Highly recommended |
| Nested vCenter | vCenter that runs within the cluster you plan to deploy. | Installation for a HyperFlex Edge cluster may be performed without a vCenter. Alternatively, you may deploy with an external vCenter and migrate it into the cluster. For the latest information, see the How to Deploy vCenter on the HX Data Platform tech note. |

Customer Deployment Information

A typical three-node HyperFlex Edge deployment requires 13 IP addresses: 10 for the management network and 3 for the vMotion network.
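The arithmetic behind these counts can be sketched in Python. This minimal sketch assumes the breakdown implied by the tables in this section.

Per node, it counts three management-network addresses:

- one CIMC address (inband CIMC on the management network, as this guide assumes);
- one hypervisor management address;
- one storage controller management address.

It then adds one storage cluster management address for the cluster, plus one vMotion address per node. The function name is hypothetical. This guide states only the three-node totals, so do not rely on other node counts.

```python
def edge_ip_requirements(nodes: int = 3) -> dict:
    """Count the IP addresses a HyperFlex Edge cluster needs (assumed breakdown, see lead-in)."""
    # Management network: CIMC + ESXi management + controller VM management per node,
    # plus one storage cluster management IP for the whole cluster.
    management = nodes * 3 + 1
    # vMotion network: one VMkernel address per node.
    vmotion = nodes
    return {"management": management, "vmotion": vmotion, "total": management + vmotion}
```

For the default three nodes this yields 10 management addresses, 3 vMotion addresses, and 13 in total, matching the figures above.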

CIMC Management IP Addresses

| Server | CIMC Management IP Addresses |
|---|---|
| Server 1 | |
| Server 2 | |
| Server 3 | |
| Subnet mask | |
| Gateway | |
| DNS Server | |
| NTP Server | |

Note: NTP configuration on CIMC is required for proper Intersight connectivity.

Network IP Addresses

Note: By default, the HX Installer automatically assigns IP addresses in the 169.254.1.X range to the Hypervisor Data Network and the Storage Controller Data Network.
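You may want to confirm that none of your planned subnets collide with this default range. The following minimal Python sketch uses the standard `ipaddress` module. It assumes "169.254.1.X" corresponds to the 169.254.1.0/24 network. The constant and function names are hypothetical.

```python
import ipaddress

# Assumed equivalent of the installer's default 169.254.1.X data range.
HX_DEFAULT_DATA_RANGE = ipaddress.ip_network("169.254.1.0/24")


def overlaps_hx_data_range(subnet: str) -> bool:
    """Return True if the given subnet overlaps the installer's default data range."""
    # strict=False accepts host bits in the input, e.g. "169.254.1.10/24".
    return ipaddress.ip_network(subnet, strict=False).overlaps(HX_DEFAULT_DATA_RANGE)
```

For example, `[s for s in ["10.10.20.0/24", "169.254.0.0/16"] if overlaps_hx_data_range(s)]` keeps only `"169.254.0.0/16"`, because that /16 contains the default /24.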

Note: Enable Spanning Tree PortFast trunk on all network ports (trunk ports). If PortFast is not configured, you may see intermittent disconnects during ESXi bootup and longer-than-necessary network reconvergence after a physical link failure.

Management Network IP Addresses (must be routable)

| Hypervisor Management Network | Storage Controller Management Network |
|---|---|
| Server 1: | Server 1: |
| Server 2: | Server 2: |
| Server 3: | Server 3: |
| Storage Cluster Management IP address | |
| Subnet mask | |
| Default gateway | |
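Both management columns share one subnet mask and one default gateway. You can therefore sanity-check a filled-in plan for two problems: addresses outside that subnet, and duplicates.

The following minimal Python sketch uses the standard `ipaddress` module. The function name and the labels (`esxi-1`, `scvm-1`, and so on) are hypothetical. It assumes IPv4 and derives the subnet from the gateway and mask.

```python
import ipaddress


def check_management_plan(addresses: dict[str, str], subnet_mask: str, gateway: str) -> list[str]:
    """Return problems found in a management IP plan (an empty list means none found).

    addresses maps a hypothetical label (for example, "esxi-1") to its planned IPv4 address.
    """
    problems = []
    gw = ipaddress.ip_address(gateway)
    # Derive the subnet from the gateway and mask, e.g. 10.0.0.1 + 255.255.255.0 -> 10.0.0.0/24.
    network = ipaddress.ip_interface(f"{gateway}/{subnet_mask}").network
    seen = set()
    for name, addr in addresses.items():
        ip = ipaddress.ip_address(addr)
        if ip not in network:
            problems.append(f"{name} ({addr}) is not in {network}")
        if ip == gw:
            problems.append(f"{name} ({addr}) conflicts with the gateway")
        elif ip in seen:
            problems.append(f"{name} ({addr}) is assigned more than once")
        seen.add(ip)
    return problems
```

An empty result means every address is unique, differs from the gateway, and falls within the subnet. It does not confirm that the addresses are routable or unused on your network.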

VMware vMotion Network IP Addresses

For vMotion services, you may configure a dedicated VMkernel port. Alternatively, if you are using the management VLAN for vMotion (not recommended), you can reuse vmk0.

| Server | vMotion Network IP Addresses (configured using the post_install script) |
|---|---|
| Server 1 | |
| Server 2 | |
| Server 3 | |
| Subnet mask | |
| Gateway | |

Important: Ensure that the following port requirements are met, in addition to the prerequisites listed for Intersight Connectivity.

If your network is behind a firewall, VMware recommends additional ports for VMware ESXi and VMware vCenter beyond the standard port requirements.

- CIP-M is for the cluster management IP.
- SCVM is the management IP for the controller VM.
- ESXi is the management IP for the hypervisor.

The comprehensive list of ports required for component communication in the HyperFlex solution is in Appendix A of the HX Data Platform Security Hardening Guide.

Tip: If you do not use standard configurations and need different port settings, refer to Table C-5 Port Literal Values to customize your environment.

Hypervisor Credentials

| Setting | Value |
|---|---|
| root username | root |
| root password | Cisco123 |

Important: Deployments based on Cisco HX Data Platform Release 3.0 and higher require a new custom password if you have not changed the default factory password before starting installation.

VMware vCenter Configuration

Note: HyperFlex communicates with vCenter through standard ports. Port 80 is used for the reverse HTTP proxy and may be changed with TAC assistance. Port 443 is used for secure communication with the vCenter SDK and cannot be changed.
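Before installation, you may want to confirm that the management network can reach vCenter on these two ports. This minimal Python sketch uses the standard `socket.create_connection`. The helper names are hypothetical, and the sketch only tests TCP reachability. It is not how HyperFlex itself talks to vCenter.

```python
import socket

# Ports HyperFlex uses to reach vCenter, per the note above.
VCENTER_PORTS = {80: "reverse HTTP proxy", 443: "vCenter SDK (HTTPS)"}


def tcp_port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # covers refused connections, timeouts, and DNS failures
        return False


def check_vcenter_ports(host: str) -> dict[int, bool]:
    """Map each vCenter port to whether it accepted a TCP connection."""
    return {port: tcp_port_open(host, port) for port in VCENTER_PORTS}
```

For example, `check_vcenter_ports("vcenter.example.com")` (a placeholder hostname) returns a dict such as `{80: True, 443: True}` when both ports accept connections. A successful connection shows only that the port is reachable through any firewalls in the path. It does not show that the service behind it is healthy.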

| Setting | Value |
|---|---|
| vCenter admin username | username@domain |
| vCenter admin password | |
| vCenter data center name | |
| VMware vSphere compute cluster and storage cluster name | |

Network Services

Note:

- DNS and NTP servers should reside outside of the HX storage cluster.
- Use an internally hosted NTP server to provide a reliable source of time.
- Preconfigure all DNS servers with forward (A) and reverse (PTR) records for each ESXi host before starting deployment. With DNS configured correctly in advance, the ESXi hosts are added to vCenter by FQDN rather than by IP address. If you skip this step, the hosts are added to the vCenter inventory by IP address. You must then change them to FQDN using the following procedure: Changing Node Identification Form in vCenter Cluster from IP to FQDN.
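Missing PTR records cause hosts to be added by IP address, so it can help to verify the records before deployment. The sketch below is an illustration built on assumptions, not part of the HX Installer.

`check_dns_records` is a hypothetical helper that uses Python's `socket.gethostbyname` and `socket.gethostbyaddr`. These query the operating system's resolver, which may include a local hosts file, rather than a specific DNS server. The resolver functions are parameters so that you can test the check offline.

```python
import socket


def check_dns_records(hostnames, resolve=socket.gethostbyname, reverse=socket.gethostbyaddr):
    """Return problems found in forward (A) and reverse (PTR) records for each FQDN."""
    problems = []
    for fqdn in hostnames:
        try:
            ip = resolve(fqdn)  # forward (A) lookup
        except OSError:  # socket.gaierror is a subclass of OSError
            problems.append(f"{fqdn}: no forward (A) record")
            continue
        try:
            name, aliases, _ = reverse(ip)  # reverse (PTR) lookup
        except OSError:  # socket.herror and socket.gaierror are subclasses of OSError
            problems.append(f"{fqdn}: no reverse (PTR) record for {ip}")
            continue
        # The PTR name (or one of its aliases) should point back to the same FQDN.
        names = {n.rstrip(".").lower() for n in [name, *aliases]}
        if fqdn.rstrip(".").lower() not in names:
            problems.append(f"{fqdn}: PTR for {ip} points to {name}")
    return problems
```

For example, `check_dns_records(["esxi-1.example.com", "esxi-2.example.com"])` with placeholder FQDNs returns an empty list when each name has an A record and a PTR record that points back to it.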

| Setting | Value |
|---|---|
| DNS Servers | <Primary DNS Server IP address, Secondary DNS Server IP address, …> |
| NTP servers | <Primary NTP Server IP address, Secondary NTP Server IP address, …> |
| Time zone | Example: US/Eastern, US/Pacific |

Connected Services

| Setting | Value |
|---|---|
| Enable Connected Services (Recommended) | Yes or No required |
| Email for service request notifications | Example: name@company.com |

Physical Requirements

HX220c nodes are 1 RU each. For a three-node cluster, 3 RU are required.

Reinstallation

To perform reinstallation of a HyperFlex Edge System, contact Cisco TAC.
