Built on Cisco silicon, the Cisco Nexus 9000 Series delivers industry-leading data center performance from the inside out.

800G ports for heavy lifting

For data requirements big or small, multispeed ports have your back with full backward compatibility. They flex and scale with you as you grow.

Seeing is securing

Toughen, tighten, and protect your network with streaming telemetry, advanced analytics, and line-rate encryption (MACsec).

The bottom line counts

Reduce operational costs with unified ports supporting 10/25G Ethernet and 8/16/32G Fibre Channel, RDMA over Converged Ethernet (RoCE), and IP storage.

The speed you need

Dial in performance with intelligent buffers and zero packet drop for 50 percent faster application completion time.

Cisco named a Leader in Gartner® 2025 Magic Quadrant™ for Data Center Switching

See why we believe our industry-leading performance, simplicity, and scalability helped us earn this recognition.

Switching solutions for every environment

Cisco N9100 Series switches

With unmatched bandwidth, low latency, and seamless integration, this is the future of high-performance networking for neoclouds and sovereign clouds.

Nexus 9200 Series switches

Dense, high-capacity spine and leaf supporting 800G for massively scaled-out fabrics.

Nexus 9300 Series switches

If spine and leaf or top-of-rack are your style, these fixed switches support ports from 1G to 800G.

Nexus 9400 Series switches

High bandwidth, small form factor. This compact modular system supports 400G speeds in a 4 RU platform.

Nexus 9500 Series switches

Enterprise or high growth? Modular configurations can support you, with ports from 1G to 400G.

Nexus 9800 Series switches

Modular switching redefined. Be 400G-ready and 800G-capable with a power-efficient distributed architecture.

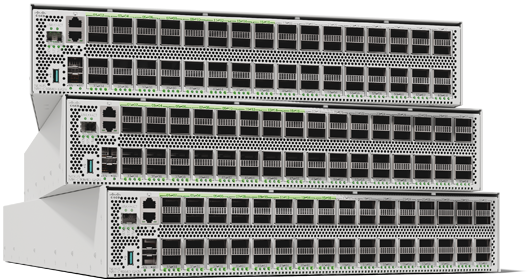

Nexus 400G and 800G family

Bandwidth like you've never known. Adopt 400G with confidence in a family of 1, 2, 4, and 16 RU switches.

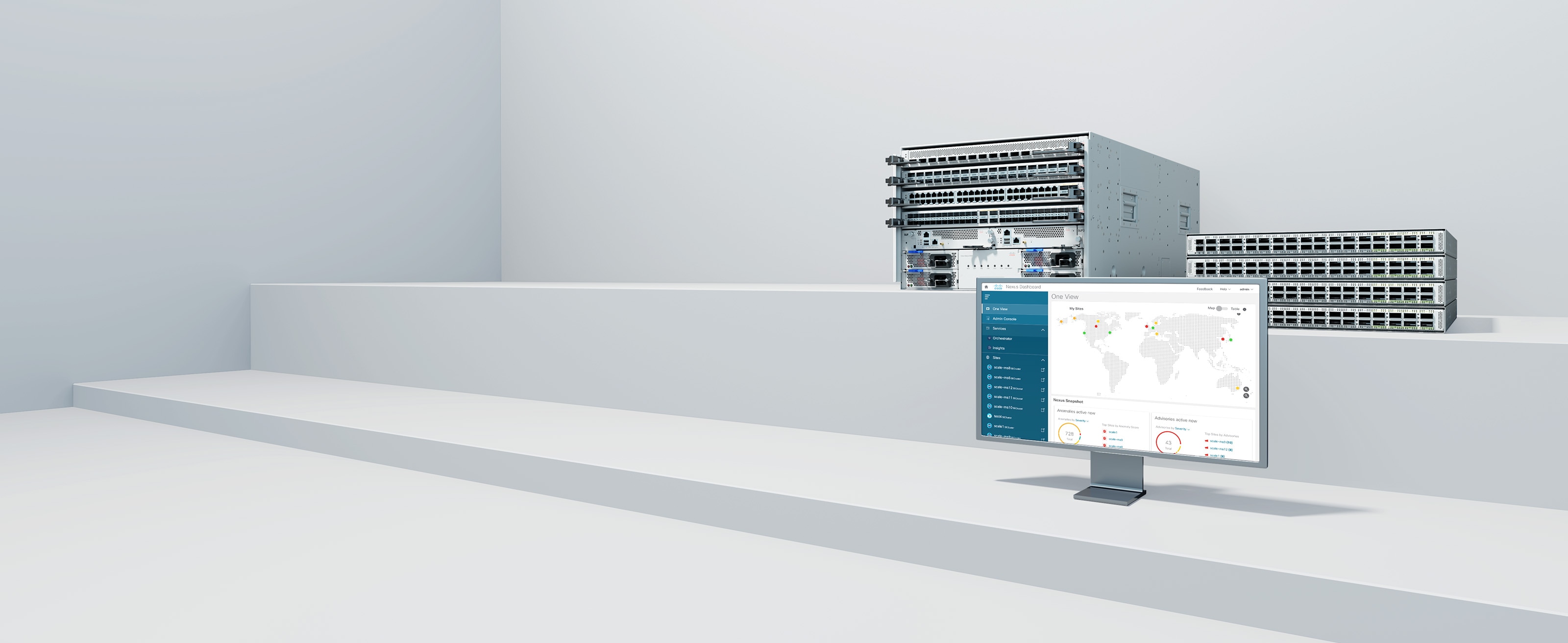

Cisco Nexus Dashboard

Simplify hybrid cloud network operations with one simple view.

Cisco NX-OS

Make your network agile, easily scalable, and secure with simplified network operations and integrated automation.

Services and support

Portfolio buying guide

Cisco Enterprise Agreement

Bring the power and breadth of our entire technology portfolio under a single, simplified agreement.

Cisco Lifecycle Services

Move your business forward

Your team. Our expertise. With your desired business outcomes as the compass, let's optimize and transform your IT environment, all while demonstrating measurable success.