- Preface

- Troubleshooting Overview

- Audit Troubleshooting

- Billing Troubleshooting

- Call Processing Troubleshooting

- Configuration Troubleshooting

- Database Troubleshooting

- Maintenance Troubleshooting

- Operations Support System Troubleshooting

- Security Troubleshooting

- Signaling Troubleshooting

- Statistics Troubleshooting

- System Troubleshooting

- Network Troubleshooting

- General Troubleshooting

- Diagnostic Tests

- Disaster Recovery Procedures

- Disk Replacement

- Recoverable and Nonrecoverable Error Codes

- System Usage of MGW Keepalive Parameters, Release 6.0

- Overload Control

- Glossary

- Introduction

- Maintenance Events and Alarms

- Maintenance (1)

- Maintenance (2)

- Maintenance (3)

- Maintenance (4)

- Maintenance (5)

- Maintenance (6)

- Maintenance (7)

- Maintenance (8)

- Maintenance (9)

- Maintenance (10)

- Maintenance (11)

- Maintenance (12)

- Maintenance (13)

- Maintenance (14)

- Maintenance (15)

- Maintenance (16)

- Maintenance (17)

- Maintenance (18)

- Maintenance (19)

- Maintenance (20)

- Maintenance (21)

- Maintenance (22)

- Maintenance (23)

- Maintenance (24)

- Maintenance (25)

- Maintenance (26)

- Maintenance (27)

- Maintenance (28)

- Maintenance (29)

- Maintenance (30)

- Maintenance (31)

- Maintenance (32)

- Maintenance (33)

- Maintenance (34)

- Maintenance (35)

- Maintenance (36)

- Maintenance (37)

- Maintenance (38)

- Maintenance (39)

- Maintenance (40)

- Maintenance (41)

- Maintenance (42)

- Maintenance (43)

- Maintenance (44)

- Maintenance (45)

- Maintenance (46)

- Maintenance (47)

- Maintenance (48)

- Maintenance (49)

- Maintenance (50)

- Maintenance (51)

- Maintenance (52)

- Maintenance (53)

- Maintenance (54)

- Maintenance (55)

- Maintenance (56)

- Maintenance (57)

- Maintenance (58)

- Maintenance (59)

- Maintenance (60)

- Maintenance (61)

- Maintenance (62)

- Maintenance (63)

- Maintenance (64)

- Maintenance (65)

- Maintenance (66)

- Maintenance (67)

- Maintenance (68)

- Maintenance (69)

- Maintenance (70)

- Maintenance (71)

- Maintenance (72)

- Maintenance (73)

- Maintenance (74)

- Maintenance (75)

- Maintenance (76)

- Maintenance (77)

- Maintenance (78)

- Maintenance (79)

- Maintenance (80)

- Maintenance (81)

- Maintenance (82)

- Maintenance (83)

- Maintenance (84)

- Maintenance (85)

- Maintenance (86)

- Maintenance (87)

- Maintenance (88)

- Maintenance (89)

- Maintenance (90)

- Maintenance (91)

- Maintenance (92)

- Maintenance (93)

- Maintenance (94)

- Maintenance (95)

- Maintenance (96)

- Maintenance (97)

- Maintenance (98)

- Maintenance (99)

- Maintenance (100)

- Maintenance (101)

- Maintenance (102)

- Maintenance (103)

- Maintenance (104)

- Maintenance (105)

- Maintenance (106)

- Maintenance (107)

- Maintenance (108)

- Maintenance (109)

- Maintenance (110)

- Maintenance (111)

- Maintenance (112)

- Maintenance (113)

- Maintenance (114)

- Maintenance (115)

- Maintenance (116)

- Maintenance (117)

- Maintenance (118)

- Maintenance (119)

- Maintenance (120)

- Maintenance (121)

- Maintenance (122)

- Maintenance (123)

- Maintenance (124)

- Maintenance (125)

- Maintenance (126)

- Maintenance (127)

- Monitoring Maintenance Events

- Test Report—Maintenance (1)

- Report Threshold Exceeded—Maintenance (2)

- Local Side Has Become Faulty—Maintenance (3)

- Mate Side Has Become Faulty—Maintenance (4)

- Changeover Failure—Maintenance (5)

- Changeover Timeout—Maintenance (6)

- Mate Rejected Changeover—Maintenance (7)

- Mate Changeover Timeout—Maintenance (8)

- Local Initialization Failure—Maintenance (9)

- Local Initialization Timeout—Maintenance (10)

- Switchover Complete—Maintenance (11)

- Initialization Successful—Maintenance (12)

- Administrative State Change—Maintenance (13)

- Call Agent Administrative State Change—Maintenance (14)

- Feature Server Administrative State Change—Maintenance (15)

- Process Manager: Process Has Died: Starting Process—Maintenance (16)

- Invalid Event Report Received—Maintenance (17)

- Process Manager: Process Has Died—Maintenance (18)

- Process Manager: Process Exceeded Restart Rate—Maintenance (19)

- Lost Connection to Mate—Maintenance (20)

- Network Interface Down—Maintenance (21)

- Mate Is Alive—Maintenance (22)

- Process Manager: Process Failed to Complete Initialization—Maintenance (23)

- Process Manager: Restarting Process—Maintenance (24)

- Process Manager: Changing State—Maintenance (25)

- Process Manager: Going Faulty—Maintenance (26)

- Process Manager: Changing Over to Active—Maintenance (27)

- Process Manager: Changing Over to Standby—Maintenance (28)

- Administrative State Change Failure—Maintenance (29)

- Element Manager State Change—Maintenance (30)

- Process Manager: Sending Go Active to Process—Maintenance (32)

- Process Manager: Sending Go Standby to Process—Maintenance (33)

- Process Manager: Sending End Process to Process—Maintenance (34)

- Process Manager: All Processes Completed Initialization—Maintenance (35)

- Process Manager: Sending All Processes Initialization Complete to Process—Maintenance (36)

- Process Manager: Killing Process—Maintenance (37)

- Process Manager: Clearing the Database—Maintenance (38)

- Process Manager: Cleared the Database—Maintenance (39)

- Process Manager: Binary Does Not Exist for Process—Maintenance (40)

- Administrative State Change Successful With Warning—Maintenance (41)

- Number of Heartbeat Messages Received Is Less Than 50% of Expected—Maintenance (42)

- Process Manager: Process Failed to Come Up in Active Mode—Maintenance (43)

- Process Manager: Process Failed to Come Up in Standby Mode—Maintenance (44)

- Application Instance State Change Failure—Maintenance (45)

- Network Interface Restored—Maintenance (46)

- Thread Watchdog Counter Expired for a Thread—Maintenance (47)

- Index Table Usage Exceeded Minor Usage Threshold Level—Maintenance (48)

- Index Table Usage Exceeded Major Usage Threshold Level—Maintenance (49)

- Index Table Usage Exceeded Critical Usage Threshold Level—Maintenance (50)

- A Process Exceeds 70% of Central Processing Unit Usage—Maintenance (51)

- Central Processing Unit Usage Is Now Below the 50% Level—Maintenance (52)

- The Central Processing Unit Usage Is Over 90% Busy—Maintenance (53)

- The Central Processing Unit Has Returned to Normal Levels of Operation—Maintenance (54)

- The Five Minute Load Average Is Abnormally High—Maintenance (55)

- The Load Average Has Returned to Normal Levels—Maintenance (56)

- Memory and Swap Are Consumed at Critical Levels—Maintenance (57)

- Memory and Swap Are Consumed at Abnormal Levels—Maintenance (58)

- No Heartbeat Messages Received Through the Interface—Maintenance (61)

- Link Monitor: Interface Lost Communication—Maintenance (62)

- Outgoing Heartbeat Period Exceeded Limit—Maintenance (63)

- Average Outgoing Heartbeat Period Exceeds Major Alarm Limit—Maintenance (64)

- Disk Partition Critically Consumed—Maintenance (65)

- Disk Partition Significantly Consumed—Maintenance (66)

- The Free Inter-Process Communication Pool Buffers Below Minor Threshold—Maintenance (67)

- The Free Inter-Process Communication Pool Buffers Below Major Threshold—Maintenance (68)

- The Free Inter-Process Communication Pool Buffers Below Critical Threshold—Maintenance (69)

- The Free Inter-Process Communication Pool Buffer Count Below Minimum Required—Maintenance (70)

- Local Domain Name System Server Response Too Slow—Maintenance (71)

- External Domain Name System Server Response Too Slow—Maintenance (72)

- External Domain Name System Server Not Responsive—Maintenance (73)

- Local Domain Name System Service Not Responsive—Maintenance (74)

- Mismatch of Internet Protocol Address Local Server and Domain Name System—Maintenance (75)

- Mate Time Differs Beyond Tolerance—Maintenance (77)

- Bulk Data Management System Admin State Change—Maintenance (78)

- Resource Reset—Maintenance (79)

- Resource Reset Warning—Maintenance (80)

- Resource Reset Failure—Maintenance (81)

- Average Outgoing Heartbeat Period Exceeds Critical Limit—Maintenance (82)

- Swap Space Below Minor Threshold—Maintenance (83)

- Swap Space Below Major Threshold—Maintenance (84)

- Swap Space Below Critical Threshold—Maintenance (85)

- System Health Report Collection Error—Maintenance (86)

- Status Update Process Request Failed—Maintenance (87)

- Status Update Process Database List Retrieval Error—Maintenance (88)

- Status Update Process Database Update Error—Maintenance (89)

- Disk Partition Moderately Consumed—Maintenance (90)

- Internet Protocol Manager Configuration File Error—Maintenance (91)

- Internet Protocol Manager Initialization Error—Maintenance (92)

- Internet Protocol Manager Interface Failure—Maintenance (93)

- Internet Protocol Manager Interface State Change—Maintenance (94)

- Internet Protocol Manager Interface Created—Maintenance (95)

- Internet Protocol Manager Interface Removed—Maintenance (96)

- Inter-Process Communication Input Queue Entered Throttle State—Maintenance (97)

- Inter-Process Communication Input Queue Depth at 25% of Its Hi-Watermark—Maintenance (98)

- Inter-Process Communication Input Queue Depth at 50% of Its Hi-Watermark—Maintenance (99)

- Inter-Process Communication Input Queue Depth at 75% of Its Hi-Watermark—Maintenance (100)

- Switchover in Progress—Maintenance (101)

- Thread Watchdog Counter Close to Expiry for a Thread—Maintenance (102)

- Central Processing Unit Is Offline—Maintenance (103)

- Aggregration Device Address Successfully Resolved—Maintenance (104)

- No Heartbeat Messages Received Through Interface From Router—Maintenance (107)

- A Log File Cannot Be Transferred—Maintenance (108)

- Five Successive Log Files Cannot Be Transferred—Maintenance (109)

- Access to Log Archive Facility Configuration File Failed or File Corrupted—Maintenance (110)

- Cannot Log In to External Archive Server—Maintenance (111)

- Congestion Status—Maintenance (112)

- Central Processing Unit Load of Critical Processes—Maintenance (113)

- Queue Length of Critical Processes—Maintenance (114)

- Inter-Process Communication Buffer Usage Level—Maintenance (115)

- Call Agent Reports the Congestion Level of Feature Server—Maintenance (116)

- Side Automatically Restarting Due to Fault—Maintenance (117)

- Domain Name Server Zone Database Does Not Match Between the Primary Domain Name Server and the Internal Secondary Authoritative Domain Name Server—Maintenance (118)

- Periodic Shared Memory Database Back Up Failure—Maintenance (119)

- Periodic Shared Memory Database Back Up Success—Maintenance (120)

- Invalid SOAP Request—Maintenance (121)

- Northbound Provisioning Message Is Retransmitted—Maintenance (122)

- Northbound Provisioning Message Dropped Due to Full Index Table—Maintenance (123)

- Periodic Shared Memory Sync Started—Maintenance (124)

- Periodic Shared Memory Sync Completed—Maintenance (125)

- Periodic Shared Memory Sync Failure—Maintenance (126)

- Manual Recovery of OMS HUB Queue Loss—Maintenance (127)

- Troubleshooting Maintenance Alarms

- Local Side Has Become Faulty—Maintenance (3)

- Mate Side Has Become Faulty—Maintenance (4)

- Changeover Failure—Maintenance (5)

- Changeover Timeout—Maintenance (6)

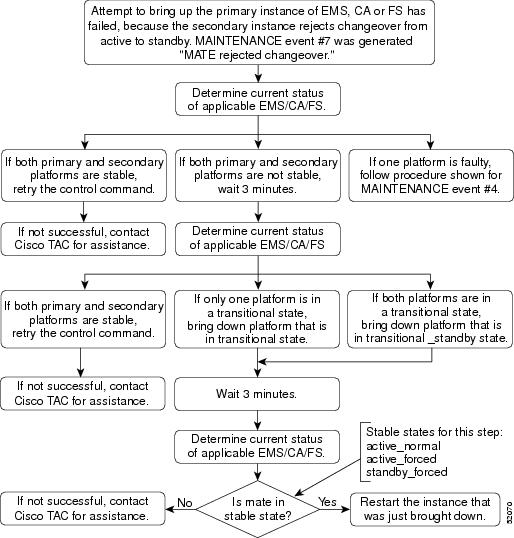

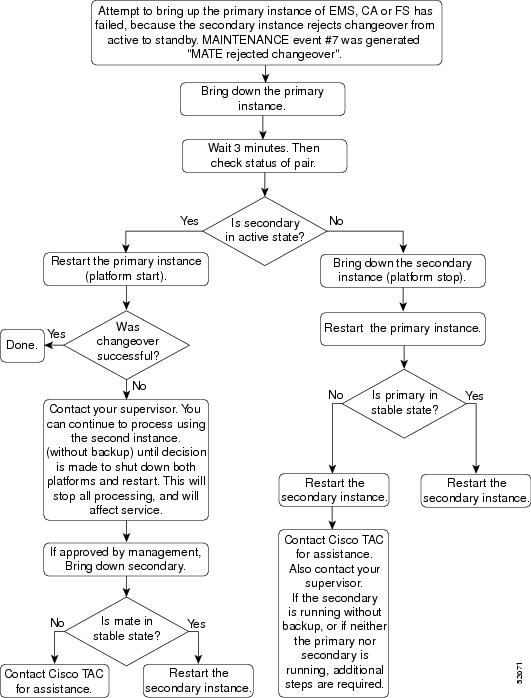

- Mate Rejected Changeover—Maintenance (7)

- Mate Changeover Timeout—Maintenance (8)

- Local Initialization Failure—Maintenance (9)

- Local Initialization Timeout—Maintenance (10)

- Process Manager: Process Has Died—Maintenance (18)

- Process Manager: Process Exceeded Restart Rate—Maintenance (19)

- Lost Connection to Mate—Maintenance (20)

- Network Interface Down—Maintenance (21)

- Process Manager: Process Failed to Complete Initialization—Maintenance (23)

- Process Manager: Restarting Process—Maintenance (24)

- Process Manager: Going Faulty—Maintenance (26)

- Process Manager: Binary Does Not Exist for Process—Maintenance (40)

- Number of Heartbeat Messages Received Is Less Than 50% Of Expected—Maintenance (42)

- Process Manager: Process Failed to Come Up In Active Mode—Maintenance (43)

- Process Manager: Process Failed to Come Up In Standby Mode—Maintenance (44)

- Application Instance State Change Failure—Maintenance (45)

- Thread Watchdog Counter Expired for a Thread—Maintenance (47)

- Index Table Usage Exceeded Minor Usage Threshold Level—Maintenance (48)

- Index Table Usage Exceeded Major Usage Threshold Level—Maintenance (49)

- Index Table Usage Exceeded Critical Usage Threshold Level—Maintenance (50)

- A Process Exceeds 70% of Central Processing Unit Usage—Maintenance (51)

- The Central Processing Unit Usage Is Over 90% Busy—Maintenance (53)

- The Five Minute Load Average Is Abnormally High—Maintenance (55)

- Memory and Swap Are Consumed at Critical Levels—Maintenance (57)

- No Heartbeat Messages Received Through the Interface—Maintenance (61)

- Link Monitor: Interface Lost Communication—Maintenance (62)

- Outgoing Heartbeat Period Exceeded Limit—Maintenance (63)

- Average Outgoing Heartbeat Period Exceeds Major Alarm Limit—Maintenance (64)

- Disk Partition Critically Consumed—Maintenance (65)

- Disk Partition Significantly Consumed—Maintenance (66)

- The Free Inter-Process Communication Pool Buffers Below Minor Threshold—Maintenance (67)

- The Free Inter-Process Communication Pool Buffers Below Major Threshold—Maintenance (68)

- The Free Inter-Process Communication Pool Buffers Below Critical Threshold—Maintenance (69)

- The Free Inter-Process Communication Pool Buffer Count Below Minimum Required—Maintenance (70)

- Local Domain Name System Server Response Too Slow—Maintenance (71)

- External Domain Name System Server Response Too Slow—Maintenance (72)

- External Domain Name System Server Not Responsive—Maintenance (73)

- Local Domain Name System Service Not Responsive—Maintenance (74)

- Mate Time Differs Beyond Tolerance—Maintenance (77)

- Average Outgoing Heartbeat Period Exceeds Critical Limit—Maintenance (82)

- Swap Space Below Minor Threshold—Maintenance (83)

- Swap Space Below Major Threshold—Maintenance (84)

- Swap Space Below Critical Threshold—Maintenance (85)

- System Health Report Collection Error—Maintenance (86)

- Status Update Process Request Failed—Maintenance (87)

- Status Update Process Database List Retrieval Error—Maintenance (88)

- Status Update Process Database Update Error—Maintenance (89)

- Disk Partition Moderately Consumed—Maintenance (90)

- Internet Protocol Manager Configuration File Error—Maintenance (91)

- Internet Protocol Manager Initialization Error—Maintenance (92)

- Internet Protocol Manager Interface Failure—Maintenance (93)

- Inter-Process Communication Input Queue Entered Throttle State—Maintenance (97)

- Inter-Process Communication Input Queue Depth at 25% of Its Hi-Watermark—Maintenance (98)

- Inter-Process Communication Input Queue Depth at 50% of Its Hi-Watermark—Maintenance (99)

- Inter-Process Communication Input Queue Depth at 75% of Its Hi-Watermark—Maintenance (100)

- Switchover in Progress—Maintenance (101)

- Thread Watchdog Counter Close to Expiry for a Thread—Maintenance (102)

- Central Processing Unit Is Offline—Maintenance (103)

- No Heartbeat Messages Received Through Interface From Router—Maintenance (107)

- Five Successive Log Files Cannot Be Transferred—Maintenance (109)

- Access To Log Archive Facility Configuration File Failed or File Corrupted—Maintenance (110)

- Cannot Log In to External Archive Server—Maintenance (111)

- Congestion Status—Maintenance (112)

- Side Automatically Restarting Due to Fault—Maintenance (117)

- Domain Name Server Zone Database Does Not Match Between the Primary Domain Name Server and the Internal Secondary Authoritative Domain Name Server—Maintenance (118)

- Periodic Shared Memory Database Back Up Failure—Maintenance (119)

- Periodic Shared Memory Sync Failure—Maintenance (126)

- Manual Recovery of OMS HUB Queue Loss—Maintenance (127)

Maintenance Troubleshooting

Introduction

This chapter provides the information needed for monitoring and troubleshooting maintenance events and alarms. This chapter is divided into the following sections:

•![]() Maintenance Events and Alarms—Provides a brief overview of each maintenance event and alarm

Maintenance Events and Alarms—Provides a brief overview of each maintenance event and alarm

•![]() Monitoring Maintenance Events—Provides the information needed for monitoring and correcting the maintenance events

Monitoring Maintenance Events—Provides the information needed for monitoring and correcting the maintenance events

•![]() Troubleshooting Maintenance Alarms—Provides the information needed for troubleshooting and correcting the maintenance alarms

Troubleshooting Maintenance Alarms—Provides the information needed for troubleshooting and correcting the maintenance alarms

Maintenance Events and Alarms

This section provides a brief overview of the maintenance events and alarms for the Cisco BTS 10200 Softswitch; the event and alarms are arranged in numerical order. Table 7-1 lists all of the maintenance events and alarms by severity.

|

Note |

|

Note |

|

|

|

|

|

|

|

|---|---|---|---|---|---|

Maintenance (1)

Table 7-2 lists the details of the Maintenance (1) informational event. For additional information, refer to the "Test Report—Maintenance (1)" section.

Description |

Test Report |

Severity |

Information |

Threshold |

10000 |

Throttle |

0 |

Maintenance (2)

Table 7-3 lists the details of the Maintenance (2) informational event. For additional information, refer to the "Report Threshold Exceeded—Maintenance (2)" section.

Maintenance (3)

Table 7-4 lists the details of the Maintenance (3) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Local Side Has Become Faulty—Maintenance (3)" section.

Maintenance (4)

Table 7-5 lists the details of the Maintenance (4) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Mate Side Has Become Faulty—Maintenance (4)" section.

Description |

Keep Alive Module: Mate Side Has Become Faulty (KAM: Mate Side Has Become Faulty) |

Severity |

Major |

Threshold |

100 |

Throttle |

0 |

Datawords |

Local State—STRING [30] |

Primary |

The local side has detected the mate side going to the faulty state. |

Primary |

Display the event summary on the faulty mate side, using the report event-summary command (see the Cisco BTS 10200 Softswitch CLI Database for command details). |

Secondary |

Review the information in the event summary. This is usually a software problem. |

Ternary |

After confirming the active side is processing traffic, restart software on the mate side. Log in to the mate platform as root user. Enter the platform stop command and then the platform start command. |

Subsequent |

If software restart does not resolve the problem or if the platform goes immediately to faulty again, or does not start, contact Cisco Technical Assistance Center (TAC). It may be necessary to reinstall software. If problem is commonly occurring, then the OS or a hardware failure may be the problem. Reboot the host machine, then reinstall and restart all applications. Rebooting brings down other applications running on this machine. Contact Cisco TAC for assistance. |

Maintenance (5)

Table 7-6 lists the details of the Maintenance (5) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Changeover Failure—Maintenance (5)" section.

Maintenance (6)

Table 7-7 lists the details of the Maintenance (6) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Changeover Timeout—Maintenance (6)" section.

Maintenance (7)

Table 7-8 lists the details of the Maintenance (7) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Mate Rejected Changeover—Maintenance (7)" section.

Maintenance (8)

Table 7-9 lists the details of the Maintenance (8) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Mate Changeover Timeout—Maintenance (8)" section.

Maintenance (9)

Table 7-10 lists the details of the Maintenance (9) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Local Initialization Failure—Maintenance (9)" section.

Maintenance (10)

Table 7-11 lists the details of the Maintenance (10) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Local Initialization Timeout—Maintenance (10)" section.

Maintenance (11)

Table 7-12 lists the details of the Maintenance (11) informational event. For additional information, refer to the "Switchover Complete—Maintenance (11)" section.

Maintenance (12)

Table 7-13 lists the details of the Maintenance (12) informational event. For additional information, refer to the "Initialization Successful—Maintenance (12)" section.

Maintenance (13)

Table 7-14 lists the details of the Maintenance (13) informational event. For additional information, refer to the "Administrative State Change—Maintenance (13)" section.

Maintenance (14)

Table 7-15 lists the details of the Maintenance (14) informational event. For additional information, refer to the "Call Agent Administrative State Change—Maintenance (14)" section.

Maintenance (15)

Table 7-16 lists the details of the Maintenance (15) informational event. For additional information, refer to the "Feature Server Administrative State Change—Maintenance (15)" section.

Maintenance (16)

Table 7-17 lists the details of the Maintenance (16) informational event. For additional information, refer to the "Process Manager: Process Has Died: Starting Process—Maintenance (16)" section.

Maintenance (17)

Table 7-18 lists the details of the Maintenance (17) informational event. For additional information, refer to the "Invalid Event Report Received—Maintenance (17)" section.

Maintenance (18)

Table 7-19 lists the details of the Maintenance (18) minor alarm. To troubleshoot and correct the cause of the alarm, refer to the "Process Manager: Process Has Died—Maintenance (18)" section.

Maintenance (19)

Table 7-20 lists the details of the Maintenance (19) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Process Manager: Process Exceeded Restart Rate—Maintenance (19)" section.

Maintenance (20)

Table 7-21 lists the details of the Maintenance (20) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Lost Connection to Mate—Maintenance (20)" section.

Maintenance (21)

Table 7-22 lists the details of the Maintenance (21) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Network Interface Down—Maintenance (21)" section.

Maintenance (22)

Table 7-23 lists the details of the Maintenance (22) informational event. For additional information, refer to the "Mate Is Alive—Maintenance (22)" section.

Maintenance (23)

Table 7-24 lists the details of the Maintenance (23) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Process Manager: Process Failed to Complete Initialization—Maintenance (23)" section.

Maintenance (24)

Table 7-25 lists the details of the Maintenance (24) minor alarm. To troubleshoot and correct the cause of the alarm, refer to the "Process Manager: Restarting Process—Maintenance (24)" section.

Maintenance (25)

Table 7-26 lists the details of the Maintenance (25) informational event. For additional information, refer to the "Process Manager: Changing State—Maintenance (25)" section.

Description |

Process Manager: Changing State (PMG: Changing State) |

Severity |

Information |

Threshold |

100 |

Throttle |

0 |

Datawords |

Platform State—STRING [40] |

Maintenance (26)

Table 7-27 lists the details of the Maintenance (26) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Process Manager: Going Faulty—Maintenance (26)" section.

Maintenance (27)

Table 7-28 lists the details of the Maintenance (27) informational event. For additional information, refer to the "Process Manager: Changing Over to Active—Maintenance (27)" section.

Description |

Process Manager: Changing Over to Active (PMG: Changing Over to Active) |

Severity |

Information |

Threshold |

100 |

Throttle |

0 |

Maintenance (28)

Table 7-29 lists the details of the Maintenance (28) informational event. For additional information, refer to the "Process Manager: Changing Over to Standby—Maintenance (28)" section.

Description |

Process Manager: Changing Over to Standby (PMG: Changing Over to Standby) |

Severity |

Information |

Threshold |

100 |

Throttle |

0 |

Maintenance (29)

Table 7-30 lists the details of the Maintenance (29) warning event. To monitor and correct the cause of the event, refer to the "Administrative State Change Failure—Maintenance (29)" section.

Maintenance (30)

Table 7-31 lists the details of the Maintenance (30) informational event. For additional information, refer to the "Element Manager State Change—Maintenance (30)" section.

Maintenance (31)

Maintenance (31) is not used.

Maintenance (32)

Table 7-32 lists the details of the Maintenance (32) informational event. For additional information, refer to the "Process Manager: Sending Go Active to Process—Maintenance (32)" section.

Maintenance (33)

Table 7-33 lists the details of the Maintenance (33) informational event. For additional information, refer to the "Process Manager: Sending Go Standby to Process—Maintenance (33)" section.

Maintenance (34)

Table 7-34 lists the details of the Maintenance (34) informational event. For additional information, refer to the "Process Manager: Sending End Process to Process—Maintenance (34)" section.

Maintenance (35)

Table 7-35 lists the details of the Maintenance (35) informational event. For additional information, refer to the "Process Manager: All Processes Completed Initialization—Maintenance (35)" section.

Maintenance (36)

Table 7-36 lists the details of the Maintenance (36) informational event. For additional information, refer to the "Process Manager: Sending All Processes Initialization Complete to Process—Maintenance (36)" section.

Maintenance (37)

Table 7-37 lists the details of the Maintenance (37) informational event. For additional information, refer to the "Process Manager: Killing Process—Maintenance (37)" section.

Maintenance (38)

Table 7-38 lists the details of the Maintenance (38) informational event. For additional information, refer to the "Process Manager: Clearing the Database—Maintenance (38)" section.

Maintenance (39)

Table 7-39 lists the details of the Maintenance (39) informational event. For additional information, refer to the "Process Manager: Cleared the Database—Maintenance (39)" section.

Maintenance (40)

Table 7-40 lists the details of the Maintenance (40) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Process Manager: Binary Does Not Exist for Process—Maintenance (40)" section.

Maintenance (41)

Table 7-41 lists the details of the Maintenance (41) warning event. To monitor and correct the cause of the event, refer to the "Administrative State Change Successful With Warning—Maintenance (41)" section.

Maintenance (42)

Table 7-42 lists the details of the Maintenance (42) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Number of Heartbeat Messages Received Is Less Than 50% Of Expected—Maintenance (42)" section.

Maintenance (43)

Table 7-43 lists the details of the Maintenance (43) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Process Manager: Process Failed to Come Up In Active Mode—Maintenance (43)" section.

Maintenance (44)

Table 7-44 lists the details of the Maintenance (44) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Process Manager: Process Failed to Come Up In Standby Mode—Maintenance (44)" section.

Maintenance (45)

Table 7-45 lists the details of the Maintenance (45) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Application Instance State Change Failure—Maintenance (45)" section.

Maintenance (46)

Table 7-46 lists the details of the Maintenance (46) informational event. For additional information, refer to the "Network Interface Restored—Maintenance (46)" section.

Maintenance (47)

Table 7-47 lists the details of the Maintenance (47) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Thread Watchdog Counter Expired for a Thread—Maintenance (47)" section.

Maintenance (48)

Table 7-48 lists the details of the Maintenance (48) minor alarm. To troubleshoot and correct the cause of the alarm, refer to the "Index Table Usage Exceeded Minor Usage Threshold Level—Maintenance (48)" section.

Maintenance (49)

Table 7-49 lists the details of the Maintenance (49) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Index Table Usage Exceeded Major Usage Threshold Level—Maintenance (49)" section.

Maintenance (50)

Table 7-50 lists the details of the Maintenance (50) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Index Table Usage Exceeded Critical Usage Threshold Level—Maintenance (50)" section.

Maintenance (51)

Table 7-51 lists the details of the Maintenance (51) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "A Process Exceeds 70% of Central Processing Unit Usage—Maintenance (51)" section.

Maintenance (52)

Table 7-52 lists the details of the Maintenance (52) informational event. For additional information, refer to the "Central Processing Unit Usage Is Now Below the 50% Level—Maintenance (52)" section.

Maintenance (53)

Table 7-53 lists the details of the Maintenance (53) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "The Central Processing Unit Usage Is Over 90% Busy—Maintenance (53)" section.

Maintenance (54)

Table 7-54 lists the details of the Maintenance (54) informational event. For additional information, refer to the "The Central Processing Unit Has Returned to Normal Levels of Operation—Maintenance (54)" section.

Maintenance (55)

Table 7-55 lists the details of the Maintenance (55) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "The Five Minute Load Average Is Abnormally High—Maintenance (55)" section.

Maintenance (56)

Table 7-56 lists the details of the Maintenance (56) informational event. For additional information, refer to the "The Load Average Has Returned to Normal Levels—Maintenance (56)" section.

Maintenance (57)

Table 7-57 lists the details of the Maintenance (57) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Memory and Swap Are Consumed at Critical Levels—Maintenance (57)" section.

|

Note |

Maintenance (58)

Table 7-58 lists the details of the Maintenance (58) informational event. For additional information, refer to the "Memory and Swap Are Consumed at Abnormal Levels—Maintenance (58)" section.

|

Note |

Maintenance (59)

Maintenance (59) is not used.

Maintenance (60)

Maintenance (60) is not used.

Maintenance (61)

Table 7-59 lists the details of the Maintenance (61) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "No Heartbeat Messages Received Through the Interface—Maintenance (61)" section.

Maintenance (62)

Table 7-60 lists the details of the Maintenance (62) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Link Monitor: Interface Lost Communication—Maintenance (62)" section.

Maintenance (63)

Table 7-61 lists the details of the Maintenance (63) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Outgoing Heartbeat Period Exceeded Limit—Maintenance (63)" section.

Maintenance (64)

Table 7-62 lists the details of the Maintenance (64) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Average Outgoing Heartbeat Period Exceeds Major Alarm Limit—Maintenance (64)" section.

Maintenance (65)

Table 7-63 lists the details of the Maintenance (65) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Disk Partition Critically Consumed—Maintenance (65)" section.

Maintenance (66)

Table 7-64 lists the details of the Maintenance (66) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Disk Partition Significantly Consumed—Maintenance (66)" section.

Maintenance (67)

Table 7-65 lists the details of the Maintenance (67) minor alarm. To troubleshoot and correct the cause of the alarm, refer to the "The Free Inter-Process Communication Pool Buffers Below Minor Threshold—Maintenance (67)" section.

Maintenance (68)

Table 7-66 lists the details of the Maintenance (68) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "The Free Inter-Process Communication Pool Buffers Below Major Threshold—Maintenance (68)" section.

Maintenance (69)

Table 7-67 lists the details of the Maintenance (69) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "The Free Inter-Process Communication Pool Buffers Below Critical Threshold—Maintenance (69)" section.

Maintenance (70)

Table 7-68 lists the details of the Maintenance (70) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "The Free Inter-Process Communication Pool Buffer Count Below Minimum Required—Maintenance (70)" section.

Maintenance (71)

Table 7-69 lists the details of the Maintenance (71) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Local Domain Name System Server Response Too Slow—Maintenance (71)" section.

Maintenance (72)

Table 7-70 lists the details of the Maintenance (72) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "External Domain Name System Server Response Too Slow—Maintenance (72)" section.

Maintenance (73)

Table 7-71 lists the details of the Maintenance (73) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "External Domain Name System Server Not Responsive—Maintenance (73)" section.

Maintenance (74)

Table 7-72 lists the details of the Maintenance (74) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Local Domain Name System Service Not Responsive—Maintenance (74)" section.

Maintenance (75)

Table 7-73 lists the details of the Maintenance (75) warning event. To monitor and correct the cause of the event, refer to the "Mismatch of Internet Protocol Address Local Server and Domain Name System—Maintenance (75)" section.

Maintenance (76)

Maintenance (76) is not used.

Maintenance (77)

Table 7-74 lists the details of the Maintenance (77) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Mate Time Differs Beyond Tolerance—Maintenance (77)" section.

Maintenance (78)

Table 7-75 lists the details of the Maintenance (78) informational event. For additional information, refer to the "Bulk Data Management System Admin State Change—Maintenance (78)" section.

Maintenance (79)

Table 7-76 lists the details of the Maintenance (79) informational event. For additional information, refer to the "Resource Reset—Maintenance (79)" section.

Maintenance (80)

Table 7-77 lists the details of the Maintenance (80) informational event. For additional information, refer to the "Resource Reset Warning—Maintenance (80)" section.

Maintenance (81)

Table 7-78 lists the details of the Maintenance (81) informational event. For additional information, refer to the "Resource Reset Failure—Maintenance (81)" section.

Maintenance (82)

Table 7-79 lists the details of the Maintenance (82) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Average Outgoing Heartbeat Period Exceeds Critical Limit—Maintenance (82)" section.

Maintenance (83)

Table 7-80 lists the details of the Maintenance (80) minor alarm. To troubleshoot and correct the cause of the alarm, refer to the "Swap Space Below Minor Threshold—Maintenance (83)" section.

Maintenance (84)

Table 7-81 lists the details of the Maintenance (84) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Swap Space Below Major Threshold—Maintenance (84)" section.

Maintenance (85)

Table 7-82 lists the details of the Maintenance (85) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Swap Space Below Critical Threshold—Maintenance (85)" section.

Maintenance (86)

Table 7-83 lists the details of the Maintenance (86) minor alarm. To troubleshoot and correct the cause of the alarm, refer to the "System Health Report Collection Error—Maintenance (86)" section.

Maintenance (87)

Table 7-84 lists the details of the Maintenance (87) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Status Update Process Request Failed—Maintenance (87)" section.

Maintenance (88)

Table 7-85 lists the details of the Maintenance (88) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Status Update Process Database List Retrieval Error—Maintenance (88)" section.

Maintenance (89)

Table 7-86 lists the details of the Maintenance (89) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Status Update Process Database Update Error—Maintenance (89)" section.

Maintenance (90)

Table 7-87 lists the details of the Maintenance (90) minor alarm. To troubleshoot and correct the cause of the alarm, refer to the "Disk Partition Moderately Consumed—Maintenance (90)" section.

Maintenance (91)

Table 7-88 lists the details of the Maintenance (91) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Internet Protocol Manager Configuration File Error—Maintenance (91)" section.

Maintenance (92)

Table 7-89 lists the details of the Maintenance (92) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Internet Protocol Manager Initialization Error—Maintenance (92)" section.

Maintenance (93)

Table 7-90 lists the details of the Maintenance (93) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Internet Protocol Manager Interface Failure—Maintenance (93)" section.

Maintenance (94)

Table 7-91 lists the details of the Maintenance (94) informational event. For additional information, refer to the "Internet Protocol Manager Interface State Change—Maintenance (94)" section.

Maintenance (95)

Table 7-92 lists the details of the Maintenance (95) informational event. For additional information, refer to the "Internet Protocol Manager Interface Created—Maintenance (95)" section.

Maintenance (96)

Table 7-93 lists the details of the Maintenance (96) informational event. For additional information, refer to the "Internet Protocol Manager Interface Removed—Maintenance (96)" section.

Maintenance (97)

Table 7-94 lists the details of the Maintenance (97) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Inter-Process Communication Input Queue Entered Throttle State—Maintenance (97)" section.

Maintenance (98)

Table 7-95 lists the details of the Maintenance (98) minor alarm. To troubleshoot and correct the cause of the alarm, refer to the "Inter-Process Communication Input Queue Depth at 25% of Its Hi-Watermark—Maintenance (98)" section.

Maintenance (99)

Table 7-96 lists the details of the Maintenance (99) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Inter-Process Communication Input Queue Depth at 50% of Its Hi-Watermark—Maintenance (99)" section.

Maintenance (100)

Table 7-97 lists the details of the Maintenance (100) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Inter-Process Communication Input Queue Depth at 75% of Its Hi-Watermark—Maintenance (100)" section.

Maintenance (101)

Table 7-98 lists the details of the Maintenance (101) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Switchover in Progress—Maintenance (101)" section.

Maintenance (102)

Table 7-99 lists the details of the Maintenance (102) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Thread Watchdog Counter Close to Expiry for a Thread—Maintenance (102)" section.

Maintenance (103)

Table 7-100 lists the details of the Maintenance (103) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Central Processing Unit Is Offline—Maintenance (103)" section.

Maintenance (104)

Table 7-101 lists the details of the Maintenance (104) informational event. For additional information, refer to the "Aggregration Device Address Successfully Resolved—Maintenance (104)" section.

Maintenance (105)

Maintenance (105) is not used.

Maintenance (106)

Maintenance (106) is not used.

Maintenance (107)

Table 7-102 lists the details of the Maintenance (107) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "No Heartbeat Messages Received Through Interface From Router—Maintenance (107)" section.

Maintenance (108)

Table 7-103 lists the details of the Maintenance (108) warning event. To monitor and correct the cause of the event, refer to the "A Log File Cannot Be Transferred—Maintenance (108)" section.

Maintenance (109)

Table 7-104 lists the details of the Maintenance (109) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Five Successive Log Files Cannot Be Transferred—Maintenance (109)" section.

Maintenance (110)

Table 7-105 lists the details of the Maintenance (110) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Access To Log Archive Facility Configuration File Failed or File Corrupted—Maintenance (110)" section.

Maintenance (111)

Table 7-106 lists the details of the Maintenance (111) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Cannot Log In to External Archive Server—Maintenance (111)" section.

Maintenance (112)

Table 7-107 lists the details of the Maintenance (112) major alarm. To troubleshoot and correct the cause of the alarm, refer to the "Congestion Status—Maintenance (112)" section.

Maintenance (113)

Table 7-108 lists the details of the Maintenance (113) informational event. For additional information, refer to the "Central Processing Unit Load of Critical Processes—Maintenance (113)" section.

Maintenance (114)

Table 7-109 lists the details of the Maintenance (114) informational event. For additional information, refer to the "Queue Length of Critical Processes—Maintenance (114)" section.

Maintenance (115)

Table 7-110 lists the details of the Maintenance (115) informational event. For additional information, refer to the "Inter-Process Communication Buffer Usage Level—Maintenance (115)" section.

Maintenance (116)

Table 7-111 lists the details of the Maintenance (116) informational event. For additional information, refer to the "Call Agent Reports the Congestion Level of Feature Server—Maintenance (116)" section.

Maintenance (117)

Table 7-112 lists the details of the Maintenance (117) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Side Automatically Restarting Due to Fault—Maintenance (117)" section.

Maintenance (118)

Table 7-113 lists the details of the Maintenance (118) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Domain Name Server Zone Database Does Not Match Between the Primary Domain Name Server and the Internal Secondary Authoritative Domain Name Server—Maintenance (118)" section.

Maintenance (119)

Table 7-114 lists the details of the Maintenance (119) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Periodic Shared Memory Database Back Up Failure—Maintenance (119)" section.

Maintenance (120)

Table 7-115 lists the details of the Maintenance (120) informational event. For additional information, refer to the "Periodic Shared Memory Database Back Up Success—Maintenance (120)" section.

Maintenance (121)

Table 7-116 lists the details of the Maintenance (121) informational event. For additional information, refer to the "Invalid SOAP Request—Maintenance (121)" section.

Maintenance (122)

Table 7-117 lists the details of the Maintenance (122) informational event. For additional information, refer to the "Northbound Provisioning Message Is Retransmitted—Maintenance (122)" section.

Maintenance (123)

Table 7-118 lists the details of the Maintenance (120) warning event. To monitor and correct the cause of the event, refer to the "Northbound Provisioning Message Dropped Due to Full Index Table—Maintenance (123)" section.

Maintenance (124)

Table 7-119 lists the details of the Maintenance (124) informational event. For additional information, refer to the "Periodic Shared Memory Sync Started—Maintenance (124)" section.

Maintenance (125)

Table 7-120 lists the details of the Maintenance (125) informational event. For additional information, refer to the "Periodic Shared Memory Sync Completed—Maintenance (125)" section.

Maintenance (126)

Table 7-121 lists the details of the Maintenance (126) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Periodic Shared Memory Sync Failure—Maintenance (126)" section.

Maintenance (127)

Table 7-122 lists the details of the Maintenence (127) critical alarm. To troubleshoot and correct the cause of the alarm, refer to the "Manual Recovery of OMS HUB Queue Loss—Maintenance (127)" section.

Description |

Loss in OMS Hub Communication |

Severity |

Critical |

Threshold |

100 |

Throttle |

0 |

Dataword |

Queue-Name - STRING[8] Platform - STRING[8] Node - STRING[8] |

Primary Cause |

Indicates either a network problem or socket connection causing OMS queue loss. |

Primary Action |

Manually restart the OMS and SMG processes. Refer section Manual Recovery of OMS HUB Queue Loss—Maintenance (127). |

Monitoring Maintenance Events

This section provides the information you need for monitoring and correcting maintenance events. Table 7-123 lists all of the maintenance events in numerical order and provides cross-references to each subsection.

|

Note |

Test Report—Maintenance (1)

The Test Report is for testing the maintenance event category. The event is informational and no further action is required.

Report Threshold Exceeded—Maintenance (2)

The Report Threshold Exceeded event functions as an informational alert that a report threshold has been exceeded. The primary cause of the event is that the threshold for a given report type and number has been exceeded. No further action is required since this is an information report. The Root Cause event report threshold should be investigated to determine if there is a service-affecting situation.

Local Side Has Become Faulty—Maintenance (3)

The Local Side Has Become Faulty alarm (major) indicates that the local side has become faulty. To troubleshoot and correct the cause of the Local Side Has Become Faulty alarm, refer to the "Local Side Has Become Faulty—Maintenance (3)" section.

Mate Side Has Become Faulty—Maintenance (4)

The Mate Side Has Become Faulty alarm (major) indicates that the mate side has become faulty. To troubleshoot and correct the cause of the Mate Side has Become Faulty alarm, refer to the "Mate Side Has Become Faulty—Maintenance (4)" section.

Changeover Failure—Maintenance (5)

The Changeover Failure alarm (major) indicates that a changeover failed. To troubleshoot and correct the cause of the Changeover Failure alarm, refer to the "Changeover Failure—Maintenance (5)" section.

Changeover Timeout—Maintenance (6)

The Changeover Timeout alarm (major) indicates that a changeover timed out. To troubleshoot and correct the cause of the Changeover Timeout alarm, refer to the "Changeover Timeout—Maintenance (6)" section.

Mate Rejected Changeover—Maintenance (7)

The Mate Rejected Changeover alarm (major) indicates that the mate rejected the changeover. To troubleshoot and correct the cause of the Mate Rejected Changeover alarm, refer to the "Mate Rejected Changeover—Maintenance (7)" section.

Mate Changeover Timeout—Maintenance (8)

The Mate Changeover Timeout alarm (major) indicates that the mate changeover timed out. To troubleshoot and correct the cause of the Mate Changeover Timeout alarm, refer to the "Mate Changeover Timeout—Maintenance (8)" section.

Local Initialization Failure—Maintenance (9)

The Local Initialization Failure alarm (major) indicates that the local initialization has failed. To troubleshoot and correct the cause of the Local Initialization Failure alarm, refer to the "Local Initialization Failure—Maintenance (9)" section.

Local Initialization Timeout—Maintenance (10)

The Local Initialization Timeout alarm (major) indicates that the local initialization has timed out. To troubleshoot and correct the cause of the Local Initialization Timeout alarm, refer to the "Local Initialization Timeout—Maintenance (10)" section.

Switchover Complete—Maintenance (11)

The Switchover Complete event functions as an informational alert that the switchover has been completed. The Switchover Complete event acknowledges that the changeover successfully completed. The event is informational and no further action is required.

Initialization Successful—Maintenance (12)

The Initialization Successful event functions as an informational alert that the initialization was successful. The Initialization Successful event indicates that a local initialization has been successful. The event is informational and no further action is required.

Administrative State Change—Maintenance (13)

The Administrative State Change event functions as an informational alert that the administrative state of a managed resource has changed. No action is required, since this informational event is given after manually changing the administrative state of a managed resource.

Call Agent Administrative State Change—Maintenance (14)

The Call Agent Administrative State Change event functions as an informational alert that indicates that the call agent has changed operational state as a result of a manual switchover. The event is informational and no further action is required.

Feature Server Administrative State Change—Maintenance (15)

The Feature Server Administrative State Change event functions as an informational alert that indicates that the feature server has changed operational state as a result of a manual switchover. The event is informational and no further action is required.

Process Manager: Process Has Died: Starting Process—Maintenance (16)

The Process Manager: Process Has Died: Starting Process event functions as an information alert that indicates that a process is being started as system is being brought up. The event is informational and no further action is required.

Invalid Event Report Received—Maintenance (17)

The Invalid Event Report Received event functions as an informational alert that indicates that a process has sent an event report that cannot be found in the database. If during system initialization a short burst of these events is issued prior to the database initialization, these events are informational and can be ignored; otherwise, contact Cisco TAC.

Process Manager: Process Has Died—Maintenance (18)

The Process Manager: Process Has Died alarm (minor) indicates that a process has died. To troubleshoot and correct the cause of the Process Manager: Process Has Died alarm, refer to the "Process Manager: Process Has Died—Maintenance (18)" section.

Process Manager: Process Exceeded Restart Rate—Maintenance (19)

The Process Manager: Process Exceeded Restart Rate alarm (major) indicates that a process has exceeded the restart rate. To troubleshoot and correct the cause of the Process Manager: Process Exceeded Restart Rate alarm, refer to the "Process Manager: Process Exceeded Restart Rate—Maintenance (19)" section.

Lost Connection to Mate—Maintenance (20)

The Lost Connection to Mate alarm (major) indicates that the keepalive module connection to the mate has been lost. To troubleshoot and correct the cause of the Lost Connection to Mate alarm, refer to the "Lost Connection to Mate—Maintenance (20)" section.

Network Interface Down—Maintenance (21)

The Network Interface Down alarm (major) indicates that the network interface has gone down. To troubleshoot and correct the cause of the Network Interface Down alarm, refer to the "Network Interface Down—Maintenance (21)" section.

Mate Is Alive—Maintenance (22)

The Mate Is Alive event functions as an informational alert that the mate is alive. The reporting CA/FS/EMS/BDMS is indicating that its mate has been successfully restored to service. The event is informational and no further action is required.

Process Manager: Process Failed to Complete Initialization—Maintenance (23)

The Process Manager: Process Failed to Complete Initialization alarm (major) indicates that a PMG process failed to complete initialization. To troubleshoot and correct the cause of the Process Manager: Process Failed to Complete Initialization alarm, refer to the "Process Manager: Process Failed to Complete Initialization—Maintenance (23)" section.

Process Manager: Restarting Process—Maintenance (24)

The Process Manager: Restarting Process alarm (minor) indicates the a PMG process is being restarted. To troubleshoot and correct the cause of the Process Manager: Restarting Process alarm, refer to the "Process Manager: Restarting Process—Maintenance (24)" section.

Process Manager: Changing State—Maintenance (25)

The Process Manager: Changing State event functions as an informational alert that a PMG process is changing state. The primary cause of the event is that a side is transitioning from one state to another. This is part of the normal side state change process. Monitor the system for other maintenance category event reports to see if the transition is due to a failure of a component within the specified side.

Process Manager: Going Faulty—Maintenance (26)

The Process Manager: Going Faulty alarm (major) indicates that a PMG process is going faulty. To troubleshoot and correct the cause of the Process Manager: Going Faulty alarm, refer to the "Process Manager: Going Faulty—Maintenance (26)" section.

Process Manager: Changing Over to Active—Maintenance (27)

The Process Manager: Changing Over to Active event functions as an informational alert that a PMG process is being changed to active. The primary cause of the event is that the specified platform instance was in the standby state and was changed to the active state either by program control or via user request. No action is necessary. This is part of the normal process of activating the platform.

Process Manager: Changing Over to Standby—Maintenance (28)

The Process Manager: Changing Over to Standby event functions as an information alert that a PMG process is being changed to standby. The primary cause of the event is that the specified side was in the active state and was changed to the standby state, or is being restored to service, and its mate is already in the active state either by program control or through a user request. No action is necessary. This is part of the normal process of restoring or duplexing the platform.

Administrative State Change Failure—Maintenance (29)

The Administrative State Change Failure event functions as a warning that a change of the administrative state has failed. The primary cause of the event is that an attempt to change the administrative state of a device has failed. Analyze the cause of the failure if you can find one. Verify that the controlling element of the targeted device was in the active state in order to change the adminstrator state of the device. If the controlling platform instance is not active, restore it to service.

Element Manager State Change—Maintenance (30)

The Element Manager State Change event functions as an informational alert that the element manager has changed state. The primary cause of the event is that the specified EMS has changed to the indicated state either naturally or through a user request. The event is informational and no action is necessary. This is part of the normal state transitioning process for the EMS. Monitor the system for related event reports if the transition was due to a faulty or out of service state.

Process Manager: Sending Go Active to Process—Maintenance (32)

The Process Manager: Sending Go Active to Process event functions as an informational alert that a process is being notified to switch to active state as the system is switching over from standby to active. The event is informational and no further action is required.

Process Manager: Sending Go Standby to Process—Maintenance (33)

The Process Manager: Sending Go Standby to Process event functions as an informational alert that a process is being notified to exit gracefully as the system is switching over to standby state, or is shutting down. The switchover or shutdown could be due to the operator taking the action to switch or shut down the system or due to the system having detected a fault. The event is informational and no further action is required.

Process Manager: Sending End Process to Process—Maintenance (34)

The Process Manager: Sending End Process to Process event functions as an informational alert that a process is being notified to exit gracefully as the system is switching over to standby state, or is shutting down. The switchover or shutdown could be due to the operator taking the action to switch or shut down the system or due to the system having detected a fault. The event is informational and no further action is required.

Process Manager: All Processes Completed Initialization—Maintenance (35)

The Process Manager: All Processes Completed Initialization event functions as an informational alert that the system is being brought up, and that all processes are ready to start executing. The event is informational and no further action is required.

Process Manager: Sending All Processes Initialization Complete to Process—Maintenance (36)

The Process Manager: Sending All Processes Initialization Complete to Process event functions as an informational alert that system is being brought up, and all processes are being notified to start executing. The event is informational and no further action is required.

Process Manager: Killing Process—Maintenance (37)

The Process Manager: Killing Process event functions as an informational alert that a process is being killed. A software problem occurred while the system was being brought up or shut down. A process did not come up when the system was brought up and had to be killed in order to restart it. The event is informational and no further action is required.

Process Manager: Clearing the Database—Maintenance (38)

The Process Manager: Clearing the Database event functions as an informational alert that the system is preparing to copy data from the mate. The system has been brought up and the mate side is running. The event is informational and no further action is required.

Process Manager: Cleared the Database—Maintenance (39)

The Process Manager: Cleared the Database event functions as an informational alert that the system is prepared to copy data from the mate. The system has been brought up and the mate side is running. The event is informational and no further action is required.

Process Manager: Binary Does Not Exist for Process—Maintenance (40)

The Process Manager: Binary Does Not Exist for Process alarm (critical) indicates that the platform was not installed correctly. To troubleshoot and correct the cause of the Process Manager: Binary Does Not Exist for Process alarm, refer to the "Process Manager: Binary Does Not Exist for Process—Maintenance (40)" section.

Administrative State Change Successful With Warning—Maintenance (41)

The Administrative State Change Successful With Warning event functions as a warning that the system was in a flux when a successful administrative state change occurred. The primary cause of the event is that the system was in flux state when an administrative change state command was issued. To correct the primary cause of the event, retry the command.

Number of Heartbeat Messages Received Is Less Than 50% of Expected—Maintenance (42)

The Number of Heartbeat messages Received Is Less Than 50% of Expected alarm (major) indicates that the number of heartbeat (HB) messages being received is less than 50% of the expected number. To troubleshoot and correct the cause of the Number of Heartbeat messages Received Is Less Than 50% of Expected alarm, refer to the "Number of Heartbeat Messages Received Is Less Than 50% Of Expected—Maintenance (42)" section.

Process Manager: Process Failed to Come Up in Active Mode—Maintenance (43)

The Process Manager: Process Failed to Come Up in Active Mode alarm (critical) indicates that the process has failed to come up in active mode. To troubleshoot and correct the cause of the Process Manager: Process Failed to Come Up in Active Mode alarm, refer to the "Process Manager: Process Failed to Come Up In Active Mode—Maintenance (43)" section.

Process Manager: Process Failed to Come Up in Standby Mode—Maintenance (44)

The Process Manager: Process Failed to Come Up in Standby Mode alarm (critical) indicates that the process has failed to come up in standby mode. To troubleshoot and correct the cause of the Process Manager: Process Failed to Come Up in Standby Mode alarm, refer to the "Process Manager: Process Failed to Come Up In Standby Mode—Maintenance (44)" section.

Application Instance State Change Failure—Maintenance (45)

The Application Instance State Change Failure alarm (major) indicates that an application instance state change failed. To troubleshoot and correct the cause of the Application Instance State Change Failure alarm, refer to the "Application Instance State Change Failure—Maintenance (45)" section.

Network Interface Restored—Maintenance (46)

The Network Interface Restored event functions as an informational alert that the network interface was restored. The primary cause of the event is that the interface cable is reconnected and the interface is put "up" using ifconfig command. The event is informational and no further action is required.

Thread Watchdog Counter Expired for a Thread—Maintenance (47)

The Thread Watchdog Counter Expired for a Thread alarm (critical) indicates that a thread watchdog counter has expired for a thread. To troubleshoot and correct the cause of the Thread Watchdog Counter Expired for a Thread alarm, refer to the "Thread Watchdog Counter Expired for a Thread—Maintenance (47)" section.

Index Table Usage Exceeded Minor Usage Threshold Level—Maintenance (48)

The Index Table Usage Exceeded Minor Usage Threshold Level alarm (minor) indicates that the index (IDX) table usage has exceeded the minor threshold crossing usage level. To troubleshoot and correct the cause of the Index Table Usage Exceeded Minor Usage Threshold Level alarm, refer to the "Index Table Usage Exceeded Minor Usage Threshold Level—Maintenance (48)" section.

Index Table Usage Exceeded Major Usage Threshold Level—Maintenance (49)

The Index Table Usage Exceeded Major Usage Threshold Level alarm (major) indicates that the IDX table usage has exceeded the major threshold crossing usage level. To troubleshoot and correct the cause of the Index Table Usage Exceeded Major Usage Threshold Level alarm, refer to the "Index Table Usage Exceeded Major Usage Threshold Level—Maintenance (49)" section.

Index Table Usage Exceeded Critical Usage Threshold Level—Maintenance (50)

The Index Table Usage Exceeded Critical Usage Threshold Level alarm (critical) indicates that the IDX table usage has exceeded the critical threshold crossing usage level. To troubleshoot and correct the cause of the Index Table Usage Exceeded Critical Usage Threshold Level alarm, refer to the "Index Table Usage Exceeded Critical Usage Threshold Level—Maintenance (50)" section.

A Process Exceeds 70% of Central Processing Unit Usage—Maintenance (51)

The A Process Exceeds 70% of Central Processing Unit Usage alarm (major) indicates that a process has exceeded the CPU usage threshold of 70 percent. To troubleshoot and correct the cause of the A Process Exceeds 70% of Central Processing Unit Usage alarm, refer to the "A Process Exceeds 70% of Central Processing Unit Usage—Maintenance (51)" section.

Central Processing Unit Usage Is Now Below the 50% Level—Maintenance (52)

The Central Processing Unit Usage Is Now Below the 50% Level event functions as an informational alert that the CPU usage level has fallen below the threshold level of 50 percent. The event is informational and no further action is required.

The Central Processing Unit Usage Is Over 90% Busy—Maintenance (53)

The Central Processing Unit Usage Is Over 90% Busy alarm (critical) indicates that the CPU usage is over the threshold level of 90 percent. To troubleshoot and correct the cause of The Central Processing Unit Usage Is Over 90% Busy alarm, refer to the "The Central Processing Unit Usage Is Over 90% Busy—Maintenance (53)" section.

The Central Processing Unit Has Returned to Normal Levels of Operation—Maintenance (54)

The Central Processing Unit Has Returned to Normal Levels of Operation event functions as an informational alert that the CPU usage has returned to the normal level of operation. The event is informational and no further actions is required.

The Five Minute Load Average Is Abnormally High—Maintenance (55)

The Five Minute Load Average Is Abnormally High alarm (major) indicates the five minute load average is abnormally high. To troubleshoot and correct the cause of The Five Minute Load Average Is Abnormally High alarm, refer to the "The Five Minute Load Average Is Abnormally High—Maintenance (55)" section.

The Load Average Has Returned to Normal Levels—Maintenance (56)

The Load Average Has Returned to Normal Levels event functions as an informational alert the load average has returned to normal levels. The event is informational and no further action is required.

Memory and Swap Are Consumed at Critical Levels—Maintenance (57)

|

Note |

The Memory and Swap Are Consumed at Critical Levels alarm (critical) indicates that memory and swap file usage have reached critical levels. To troubleshoot and correct the cause of the Memory and Swap Are Consumed at Critical Levels alarm, refer to the "Memory and Swap Are Consumed at Critical Levels—Maintenance (57)" section.

Memory and Swap Are Consumed at Abnormal Levels—Maintenance (58)

|

Note |

The Memory and Swap Are Consumed at Abnormal Levels event functions as an informational alert that the memory and swap file usage are being consumed at abnormal levels. The primary cause of the event is that a process or multiple processes have consumed an abnormal amount of memory on the system and the operating system is utilizing an abnormal amount of the swap space for process execution. This can be a result of high call rates or bulk provisioning activity. Monitor the system to ensure all subsystems are performing normally. If they are, only lightening the effective load on the system will clear the situation. If some subsystems are not performing normally, verify which process(es) are running at abnormally high rates, and contact Cisco TAC.

No Heartbeat Messages Received Through the Interface—Maintenance (61)

The No Heartbeat Messages Received Through the Interface alarm (critical) indicates that no HB messages are being received through the local network interface. To troubleshoot and correct the cause of the No Heartbeat Messages Received Through the Interface alarm, refer to the "No Heartbeat Messages Received Through the Interface—Maintenance (61)" section.

Link Monitor: Interface Lost Communication—Maintenance (62)

The Link Monitor: Interface Lost Communication alarm (major) indicates that an interface has lost communication. To troubleshoot and correct the cause of the Link Monitor: Interface Lost Communication alarm, refer to the "Link Monitor: Interface Lost Communication—Maintenance (62)" section.

Outgoing Heartbeat Period Exceeded Limit—Maintenance (63)

The Outgoing Heartbeat Period Exceeded Limit alarm (major) indicates that the outgoing HB period has exceeded the limit. To troubleshoot and correct the cause of the Outgoing Heartbeat Period Exceeded Limit alarm, refer to the "Outgoing Heartbeat Period Exceeded Limit—Maintenance (63)" section.

Average Outgoing Heartbeat Period Exceeds Major Alarm Limit—Maintenance (64)

The Average Outgoing Heartbeat Period Exceeds Major Alarm Limit alarm (major) indicates that the average outgoing HB period has exceeded the major threshold crossing alarm limit. To troubleshoot and correct the cause of the Average Outgoing Heartbeat Period Exceeds Major Alarm Limit alarm, refer to the "Average Outgoing Heartbeat Period Exceeds Major Alarm Limit—Maintenance (64)" section.

Disk Partition Critically Consumed—Maintenance (65)

The Disk Partition Critically Consumed alarm (critical) indicates that the disk partition consumption has reached critical limits. To troubleshoot and correct the cause of the Disk Partition Critically Consumed alarm, refer to the "Disk Partition Critically Consumed—Maintenance (65)" section.

Disk Partition Significantly Consumed—Maintenance (66)

The Disk Partition Significantly Consumed alarm (major) indicates that the disk partition consumption has reached the major threshold crossing level. To troubleshoot and correct the cause of the Disk Partition Significantly Consumed alarm, refer to the "Disk Partition Significantly Consumed—Maintenance (66)" section.

The Free Inter-Process Communication Pool Buffers Below Minor Threshold—Maintenance (67)

The Free Inter-Process Communication Pool Buffers Below Minor Threshold alarm (minor) indicates that the number of free IPC pool buffers has fallen below the minor threshold crossing level. To troubleshoot and correct the cause of The Free Inter-Process Communication Pool Buffers Below Minor Threshold alarm, refer to the "The Free Inter-Process Communication Pool Buffers Below Minor Threshold—Maintenance (67)" section.

The Free Inter-Process Communication Pool Buffers Below Major Threshold—Maintenance (68)

The Free Inter-Process Communication Pool Buffers Below Major Threshold alarm (major) indicates that the number of free IPC pool buffers has fallen below the major threshold crossing level. To troubleshoot and correct the cause of The Free Inter-Process Communication Pool Buffers Below Major Threshold alarm, refer to the "The Free Inter-Process Communication Pool Buffers Below Major Threshold—Maintenance (68)" section.

The Free Inter-Process Communication Pool Buffers Below Critical Threshold—Maintenance (69)

The Free Inter-Process Communication Pool Buffers Below Critical Threshold alarm (critical) indicates that the number of free IPC pool buffers has fallen below the critical threshold crossing level. To troubleshoot and correct the cause of The Free Inter-Process Communication Pool Buffers Below Critical Threshold alarm, refer to the "The Free Inter-Process Communication Pool Buffers Below Critical Threshold—Maintenance (69)" section.

The Free Inter-Process Communication Pool Buffer Count Below Minimum Required—Maintenance (70)

The Free Inter-Process Communication Pool Buffers Below Critical Threshold alarm (critical) indicates that the IPC pool buffers are not being freed properly by the application or the application is not able to keep up with the incoming IPC messaging traffic. To troubleshoot and correct the cause of The Free Inter-Process Communication Pool Buffers Below Critical Threshold alarm, refer to the "The Free Inter-Process Communication Pool Buffer Count Below Minimum Required—Maintenance (70)" section.

Local Domain Name System Server Response Too Slow—Maintenance (71)

The Local Domain Name System Server Response Too Slow alarm (major) indicates that the response time of the local DNS server is too slow. To troubleshoot and correct the cause of the Local Domain Name System Server Response Too Slow alarm, refer to the "Local Domain Name System Server Response Too Slow—Maintenance (71)" section.

External Domain Name System Server Response Too Slow—Maintenance (72)

The External Domain Name System Server Response Too Slow alarm (major) indicates that the response time of the external DNS server is too slow. To troubleshoot and correct the cause of the External Domain Name System Server Response Too Slow alarm, refer to the "External Domain Name System Server Response Too Slow—Maintenance (72)" section.

External Domain Name System Server Not Responsive—Maintenance (73)

The External Domain Name System Server Not Responsive alarm (critical) indicates that the external DNS server is not responding to network queries. To troubleshoot and correct the cause of the External Domain Name System Server Not Responsive alarm, refer to the "External Domain Name System Server Not Responsive—Maintenance (73)" section.

Local Domain Name System Service Not Responsive—Maintenance (74)

The Local Domain Name System Service Not Responsive alarm (critical) indicates that the local DNS server is not responding to network queries. To troubleshoot and correct the cause of the Local Domain Name System Service Not Responsive alarm, refer to the "Local Domain Name System Service Not Responsive—Maintenance (74)" section.

Mismatch of Internet Protocol Address Local Server and Domain Name System—Maintenance (75)

The Mismatch of Internet Protocol Address Local Server and Domain Name System event functions as a warning that a mismatch of the local server IP address and the DNS server address has occurred. The primary cause of the event is that the DNS server updates are not getting to the Cisco BTS 10200 from the external server, or the discrepancy was detected before the local DNS lookup table was updated. Ensure the external DNS server is operational and sending updates to the Cisco BTS 10200.

Mate Time Differs Beyond Tolerance—Maintenance (77)

The Mate Time Differs Beyond Tolerance alarm (major) indicates that the mate time differs beyond the tolerance. To troubleshoot and correct the cause of the Mate Time Differs Beyond Tolerance alarm, refer to the "Mate Time Differs Beyond Tolerance—Maintenance (77)" section.

Bulk Data Management System Admin State Change—Maintenance (78)

The Bulk Data Management System Admin State Change event functions as an informational alert that the BDMS administrative state has changed. The primary cause of the event is that the Bulk Data Management Server was switched over manually. The event is informational and no further action is required.

Resource Reset—Maintenance (79)

The Resource Reset event functions as an informational alert that a resource reset has occurred. The event is informational and no further action is required.

Resource Reset Warning—Maintenance (80)

The Resource Reset Warning event functions as an informational alert that a resource reset is about to occur. The event is informational and no further action is required.

Resource Reset Failure—Maintenance (81)