About EMC VPLEX

VPLEX is an EMC technology that provides a virtual storage system and access to data in the private cloud. You can implement VPLEX in Cisco UCS Director through a pod deployment, such as Vblock, or as a standalone device. VPLEX has the following capabilities:

- Uses a single interface for a multi-vendor high-availability storage and compute infrastructure to dynamically move applications and data across different compute and storage locations in real time, with no outage required. VPLEX combines scaled clustering with distributed cache coherence intelligence within the same data center, across a campus, or within a specific geographical region. Cache coherency manages the cache so that data is not lost, corrupted, or overwritten.

- Dynamically makes data available for organizations. For example, a business can be sustained through a failure that would have traditionally required outages or manual restore procedures.

- Presents and maintains the same data consistently within and between sites, and enables distributed data collaboration.

- Sits between the ESX hosts that act as servers for virtual machines (VMs) and the storage in a storage area network (SAN), so that data can be extended within, between, and across pods.

EMC VPLEX Technology

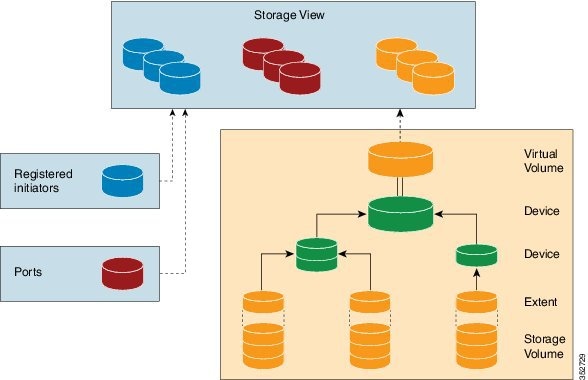

EMC VPLEX encapsulates traditional physical storage array devices and applies three layers of logical abstraction to them. The figure shows the logical relationships between the layers.

VPLEX uses extents to divide storage volumes. Extents can be all or part of the underlying storage volume. VPLEX aggregates extents and applies RAID protection in the device layer. Devices are constructed using one or more extents.

At the top layer of the VPLEX storage structures are virtual volumes, which are created from underlying devices and inherit their size. A virtual volume can be a single contiguous volume that is distributed over two or more storage volumes.
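The layering described above (storage volume, extent, device, virtual volume) can be sketched as a small data model. This is only an illustration, not VPLEX code: the class names, the `geometry` labels, and the sizing rule (a mirror is as large as its smallest extent, any other geometry is the sum of its extents) are simplifying assumptions.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class StorageVolume:
    """A LUN exported from a back-end array (size in blocks)."""
    name: str
    size_blocks: int


@dataclass(frozen=True)
class Extent:
    """All or part of one storage volume: a contiguous range of blocks."""
    volume: StorageVolume
    start_block: int
    block_count: int

    def __post_init__(self) -> None:
        if self.start_block < 0 or self.block_count <= 0:
            raise ValueError("extent must cover a positive block range")
        if self.start_block + self.block_count > self.volume.size_blocks:
            raise ValueError("extent exceeds its storage volume")


@dataclass(frozen=True)
class Device:
    """One or more extents combined under a RAID geometry."""
    name: str
    extents: Tuple[Extent, ...]
    geometry: str = "raid-0"  # hypothetical labels: "raid-0" or "raid-1"

    def __post_init__(self) -> None:
        if not self.extents:
            raise ValueError("a device needs at least one extent")

    @property
    def size_blocks(self) -> int:
        sizes = [e.block_count for e in self.extents]
        # Assumed sizing rule: mirrors are limited by the smallest leg.
        return min(sizes) if self.geometry == "raid-1" else sum(sizes)


@dataclass(frozen=True)
class VirtualVolume:
    """Top layer: inherits its size from the underlying device."""
    name: str
    device: Device

    @property
    def size_blocks(self) -> int:
        return self.device.size_blocks


# One virtual volume distributed over two storage volumes.
vol_a = StorageVolume("array1_lun7", 1000)
vol_b = StorageVolume("array2_lun3", 1000)
device = Device("dev_app01", (Extent(vol_a, 0, 1000), Extent(vol_b, 0, 600)))
virtual_volume = VirtualVolume("vv_app01", device)
print(virtual_volume.size_blocks)  # 1600
```

The first extent covers all of `array1_lun7` and the second covers only part of `array2_lun3`, which matches the statement that an extent can be all or part of a storage volume.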

VPLEX exposes virtual volumes to the hosts that need them through its front-end (FE) ports, which are visible to hosts. Access to virtual volumes is controlled through storage views. Storage views act as logical containers that determine host initiator access to VPLEX FE ports and virtual volumes.
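As a rough sketch of how a storage view gates access, the following model treats a view as three sets and allows a host I/O path only when the initiator, the FE port, and the virtual volume all belong to the same view. The class, function, and identifier names are hypothetical and are not part of any VPLEX or Cisco UCS Director API.

```python
from dataclasses import dataclass, field
from typing import Iterable, Set


@dataclass
class StorageView:
    """Logical container tying initiators, FE ports, and virtual volumes together."""
    name: str
    initiators: Set[str] = field(default_factory=set)       # registered host initiators
    fe_ports: Set[str] = field(default_factory=set)         # VPLEX front-end ports
    virtual_volumes: Set[str] = field(default_factory=set)  # volumes exposed by this view

    def allows(self, initiator: str, fe_port: str, volume: str) -> bool:
        """True only if all three members belong to this view."""
        return (
            initiator in self.initiators
            and fe_port in self.fe_ports
            and volume in self.virtual_volumes
        )


def can_access(views: Iterable[StorageView], initiator: str, fe_port: str, volume: str) -> bool:
    """A host reaches a volume if at least one view grants the full path."""
    return any(view.allows(initiator, fe_port, volume) for view in views)


# Hypothetical identifiers for illustration only.
esx_view = StorageView(
    name="view_esx_cluster1",
    initiators={"esx01_hba0", "esx02_hba0"},
    fe_ports={"fe_port_a0", "fe_port_b0"},
    virtual_volumes={"vv_app01"},
)

print(can_access([esx_view], "esx01_hba0", "fe_port_a0", "vv_app01"))  # True
print(can_access([esx_view], "esx03_hba0", "fe_port_a0", "vv_app01"))  # False
```

The second call fails because `esx03_hba0` is not a registered initiator in the view, even though the port and volume are.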

VPLEX can use a Local or Metro external hardware interface, depending on the network implementation, as described in the following sections. For more information about VPLEX Local and VPLEX Metro solutions, see the Data Center Interconnect Design Guide for Virtualized Workload Mobility with Cisco, EMC, and VMware.

VPLEX Local

Use VPLEX Local when homogeneous or heterogeneous storage systems are integrated into a pod and data mobility is managed between the physical data storage entities.

- Up to four engines

- Up to 8000 logical unit numbers (LUNs)

- Single site

- Single pod

VPLEX Metro

Use VPLEX Metro when access and data mobility are required between two locations that are separated by synchronous distances. VPLEX Metro allows a remote site to present logical unit numbers (LUNs) without needing physical storage for them. VPLEX Metro configurations help users to transparently move and share workloads, consolidate a pod, and optimize resource utilization across pods.

- One to eight engines

- Up to 16,000 LUNs

- Two sites

- Up to 100 kilometers
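The limits listed for VPLEX Local and VPLEX Metro can be turned into a simple sizing check. The sketch below uses only the figures given in the two lists above. The `Limits` type and `pick_deployment` function are hypothetical helpers for illustration, not a sizing tool, and a real design would weigh more factors than these.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Limits:
    name: str
    max_engines: int
    max_luns: int
    sites: int
    max_distance_km: Optional[float]  # None when distance does not apply


# Values copied from the VPLEX Local and VPLEX Metro lists above.
LOCAL = Limits("VPLEX Local", max_engines=4, max_luns=8000, sites=1, max_distance_km=None)
METRO = Limits("VPLEX Metro", max_engines=8, max_luns=16000, sites=2, max_distance_km=100.0)


def pick_deployment(sites: int, luns: int, distance_km: float = 0.0) -> Optional[Limits]:
    """Return the first deployment whose limits cover the request, or None."""
    for option in (LOCAL, METRO):
        if sites != option.sites:
            continue
        if luns > option.max_luns:
            continue
        if option.max_distance_km is not None and distance_km > option.max_distance_km:
            continue
        return option
    return None


for request in [(1, 5000, 0.0), (2, 12000, 80.0), (2, 12000, 150.0)]:
    choice = pick_deployment(*request)
    print(request, "->", choice.name if choice else "outside the listed limits")
```

A request for two sites that are 150 kilometers apart returns no match because it exceeds the 100-kilometer limit listed for VPLEX Metro.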

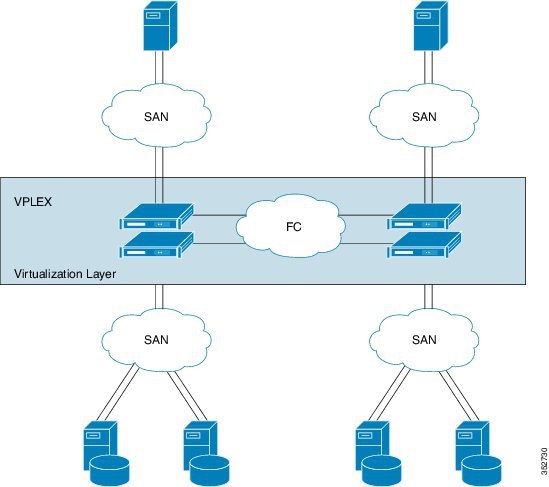

VPLEX Clustering Architecture

Managing the VPLEX Storage System for a Pod

The VPLEX virtual storage system technology for accessing data in the private cloud is associated with and supported by a pod. Cisco UCS Director collects data through the VPLEX Element Manager API and connects to the VPLEX server through HTTPS. After you establish a VPLEX account and associate a pod with a VPLEX cluster (made up of one, two, or four engines in a physical cabinet), you can configure, manage, and monitor the following VPLEX features in Cisco UCS Director:

- Cluster inventory of ESX hosts and reports for two or more VPLEX directors that form a single fault-tolerant cluster and that are deployed as one to four engines.

- VPLEX engine inventory and reports for an engine that contains two directors, management modules, and redundant power.

- Director inventory and reports for the CPU module(s) that run GeoSynchrony, the core VPLEX software. Two directors are in each engine; each has dedicated resources that can function independently.

- Port inventory and reports for front-end (FE) ports and initiator ports.

- VPLEX (local, metro, or global) data cache report for the temporary storage of recent writes and recently accessed data.

- Storage volume inventory and reports for a logical unit number (LUN) exported from an array.

- Extent management (create, delete, report) for a slice (range of blocks) of a storage volume.

- Device management (create, delete, attach/detach mirror, report) for a RAID 1 device whose mirrors are in geographically separate locations.

- Virtual volume management (create, modify, delete, report) for a virtual volume that can be distributed over two or more storage volumes that are presented to ESX hosts.

- Storage views management (create, modify, delete, report) for a combination of registered initiators (hosts), front-end ports, and virtual volumes that are used to control host access to storage.

- Recovery point for determining the amount of data that can be lost before a given failure event.
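The cluster, engine, and director relationship described in this section (a cluster of one, two, or four engines, with two directors in each engine) can be expressed as a short calculation. The function names and the director naming scheme below are hypothetical and are not the identifiers that VPLEX or Cisco UCS Director reports.

```python
from typing import List

VALID_ENGINE_COUNTS = (1, 2, 4)  # engines per cluster, as stated in this section
DIRECTORS_PER_ENGINE = 2         # two directors in each engine


def director_count(engines: int) -> int:
    """Number of directors in a cluster with the given engine count."""
    if engines not in VALID_ENGINE_COUNTS:
        raise ValueError(f"a cluster has one, two, or four engines, not {engines}")
    return engines * DIRECTORS_PER_ENGINE


def director_names(engines: int) -> List[str]:
    """Enumerate directors using an illustrative engine/director naming scheme."""
    director_count(engines)  # reuse the validation above
    return [
        f"engine-{engine}/director-{side}"
        for engine in range(1, engines + 1)
        for side in ("A", "B")
    ]


print(director_count(4))  # 8
print(director_names(2))
```

Even a single-engine cluster yields two directors, which is consistent with the statement that two or more directors form a single fault-tolerant cluster.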

For more information about VPLEX use cases, see the EMC VPLEX Metro Functional Overview section of the Cisco Virtualized Workload Mobility Design Considerations chapter in the Data Center Interconnect Design Guide for Virtualized Workload Mobility with Cisco, EMC, and VMware.
