Prerequisites

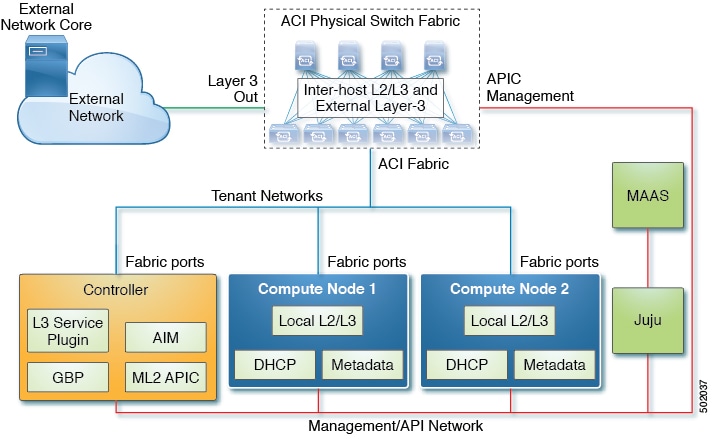

This section describes the prerequisites for installing the OpFlex plugin on Ubuntu with Juju.

- Working knowledge of Ubuntu Linux
- Experience deploying OpenStack using MAAS and Juju
- Some experience with the APIC UI may be required
- A working ACI fabric
- MAAS set up on Ubuntu Xenial with Juju 2
- A minimum of four servers with at least one fabric interface
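
The two software prerequisites above (Ubuntu Xenial and Juju 2) can be checked on a host before installation begins. The following is a minimal sketch, not part of the official procedure. The helper names `juju_major`, `os_codename`, and `check_host` are hypothetical, and the script assumes that `/etc/os-release` carries a `VERSION_CODENAME` or `UBUNTU_CODENAME` entry and that `juju version` prints a string beginning with the dotted version number. It does not verify MAAS, the ACI fabric, or the server count.

```python
import re
import shutil
import subprocess


def juju_major(version_output):
    """Return the major version parsed from `juju version` output, or None."""
    match = re.match(r"\s*(\d+)\.", version_output)
    return int(match.group(1)) if match else None


def os_codename(os_release_text):
    """Return the release codename from os-release style KEY=value text, or None."""
    for line in os_release_text.splitlines():
        key, _, value = line.partition("=")
        if key in ("VERSION_CODENAME", "UBUNTU_CODENAME"):
            return value.strip().strip('"')
    return None


def check_host():
    """Return a list of human-readable problems found on the local host."""
    problems = []

    # Assumption: the codename is exposed in /etc/os-release.
    try:
        with open("/etc/os-release") as handle:
            codename = os_codename(handle.read())
    except OSError:
        codename = None
    if codename != "xenial":
        problems.append("expected Ubuntu xenial, found {!r}".format(codename))

    # Assumption: the Juju client is installed on this host and on PATH.
    if shutil.which("juju") is None:
        problems.append("juju client not found on PATH")
    else:
        try:
            output = subprocess.check_output(
                ["juju", "version"], universal_newlines=True
            )
        except (OSError, subprocess.CalledProcessError):
            output = ""
        if juju_major(output) != 2:
            problems.append("expected Juju 2.x, found {!r}".format(output.strip()))

    return problems


if __name__ == "__main__":
    for problem in check_host():
        print("WARNING:", problem)
```

Running the script on the MAAS or Juju client host prints one warning per unmet check and prints nothing when both checks pass.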
