Cisco Virtualization Solution for EMC VSPEX with VMware vSphere 5.0 for 50, 100 and 125 Virtual Machines


Table of Contents

About Cisco Validated Design (CVD) Program

Cisco Solution for EMC VSPEX VMware vSphere 5.0 Architectures

Cisco Unified Computing System

Cisco C220 M3 Rack-Mount Servers

Cisco Nexus 1000v Virtual Switch

EMC Storage Technologies and Benefits

Memory Configuration Guidelines

ESX/ESXi Memory Management Concepts

Virtual Machine Memory Concepts

Allocating Memory to Virtual Machines

VSPEX VMware Memory Virtualization

Virtual Networking Best Practices

Non-Uniform Memory Access (NUMA)

VSPEX VMware Storage Virtualization

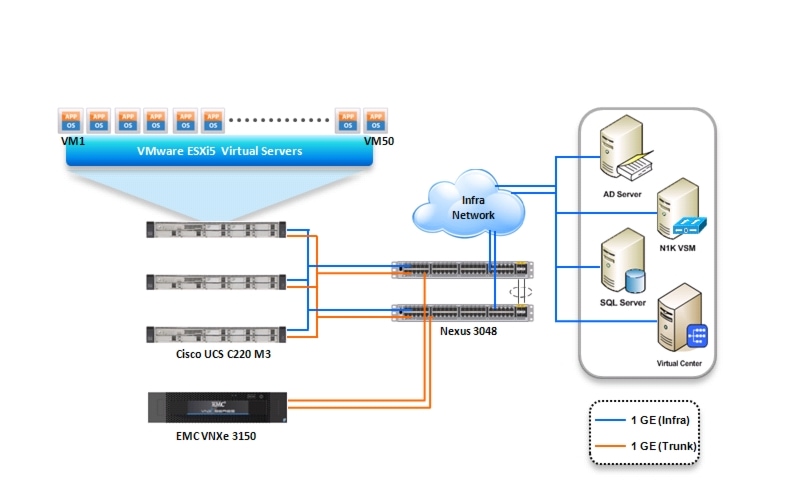

Architecture for 50 VMware Virtual Machines

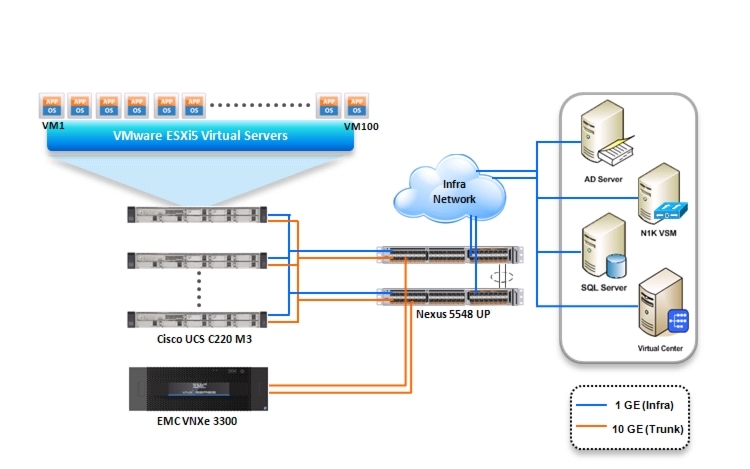

Architecture for 100 VMware Virtual Machines

Architecture for 125 VMware Virtual Machines

Defining the Reference Workload

Applying the Reference Workload

VSPEX Configuration Guidelines

Configure Management IP Address for CIMC Connectivity

Enabling Virtualization Technology in BIOS

Preparing Switches, Connecting Network, and Configuring Switches

Topology Diagram for 50 Virtual Machines

Topology Diagram for 100 Virtual Machines

Topology Diagram for Hundred and Twenty Five Virtual Machines

Configuring Cisco Nexus Switches

Preparing and Configuring EMC Storage Array

Configuring VNXe3000 series storage arrays

Installing VMware ESXi Servers and vCenter Infrastructure

Installing and Configuring Microsoft SQL Server Database

Deploying VMware vCenter Server

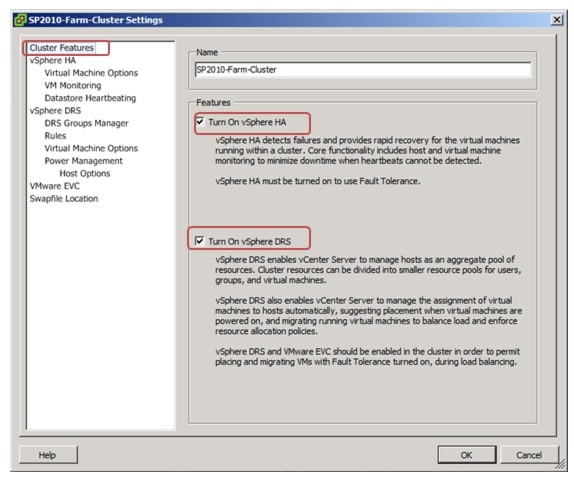

Configuring Cluster, HA and DRS on the VMware vCenter

Template-Based Deployments for Rapid Provisioning

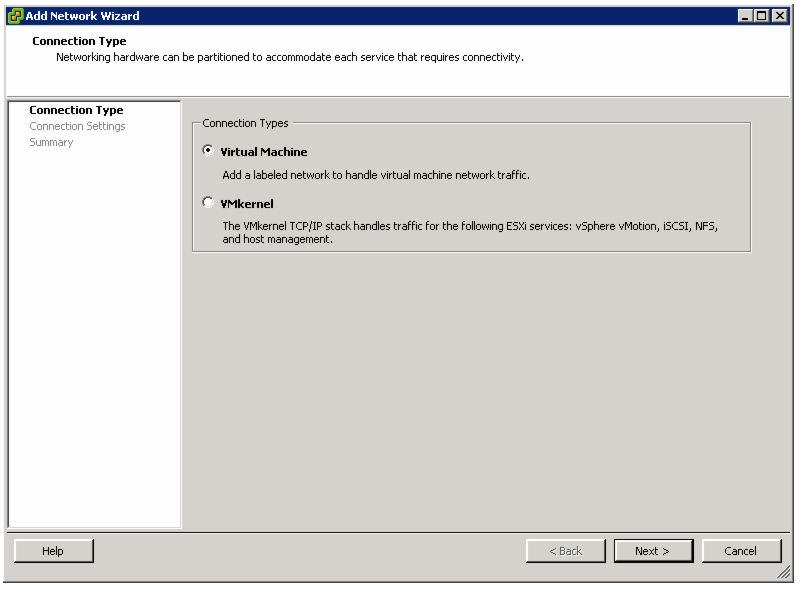

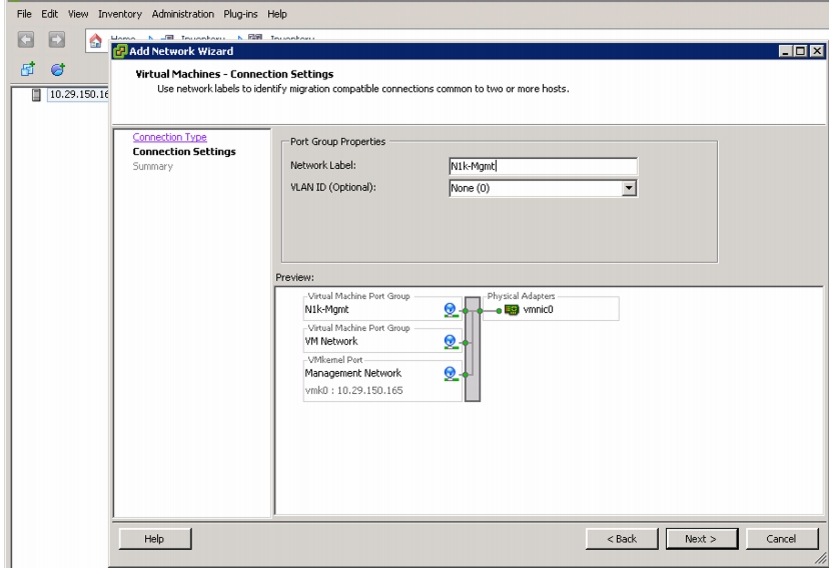

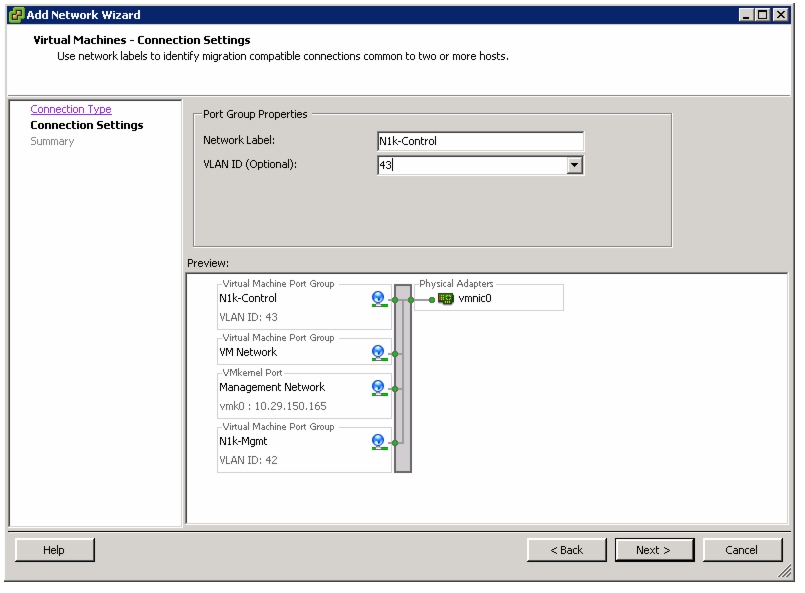

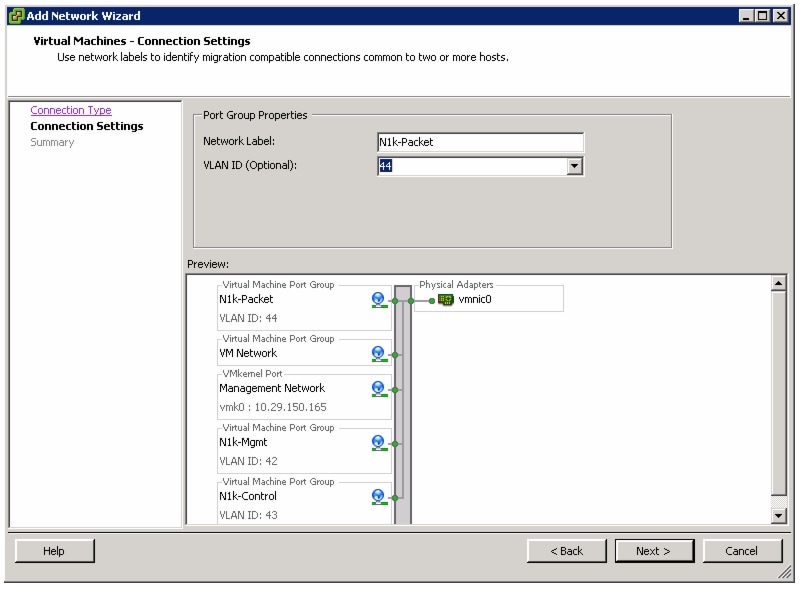

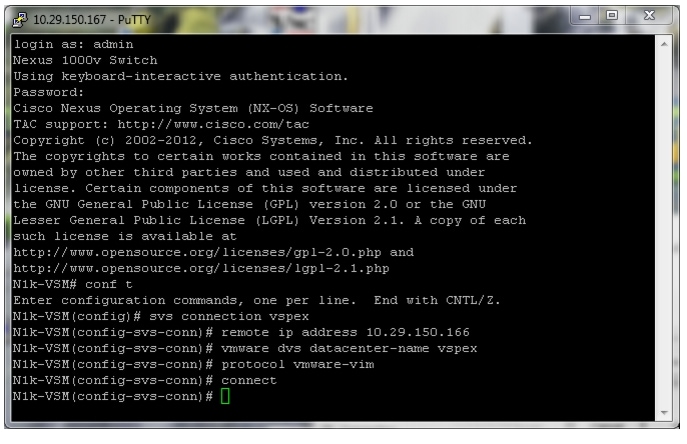

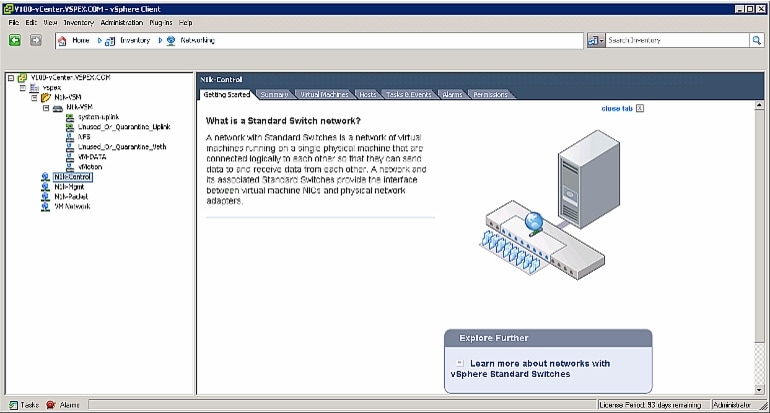

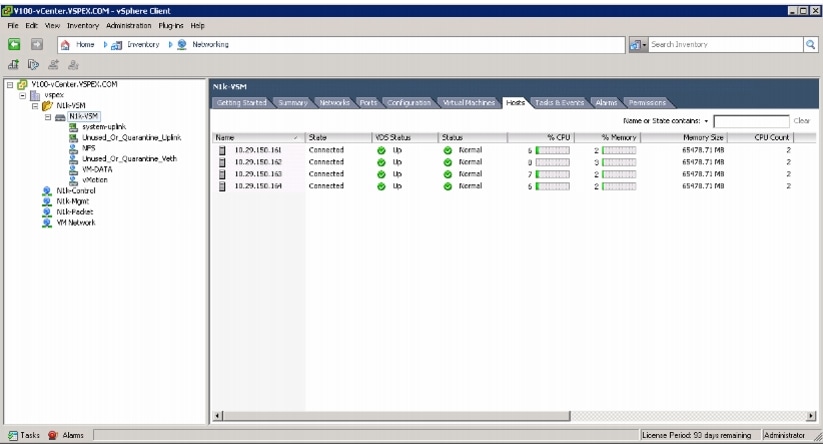

Installing and Configuring Cisco Nexus 1000v

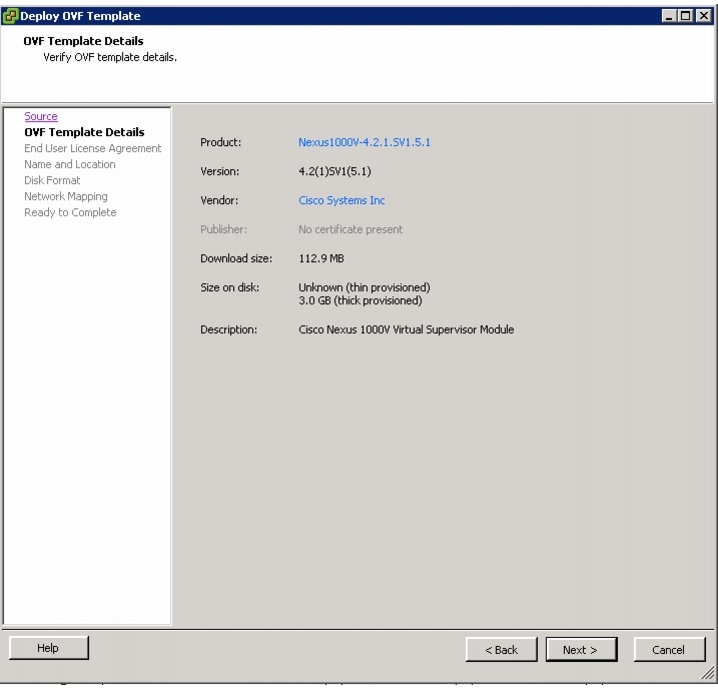

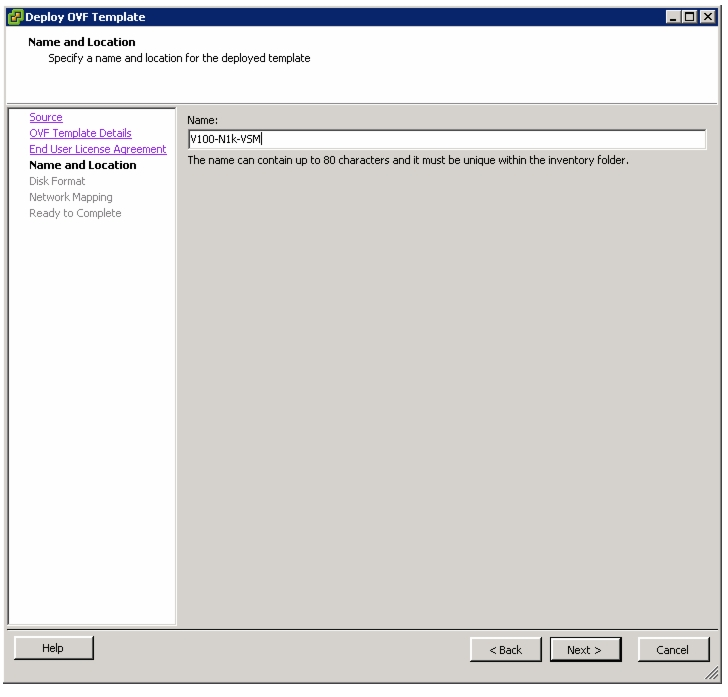

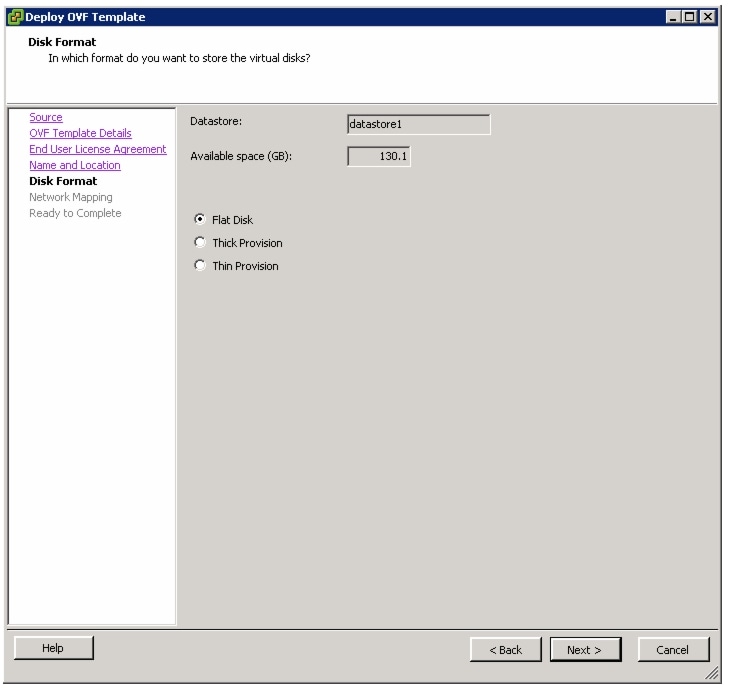

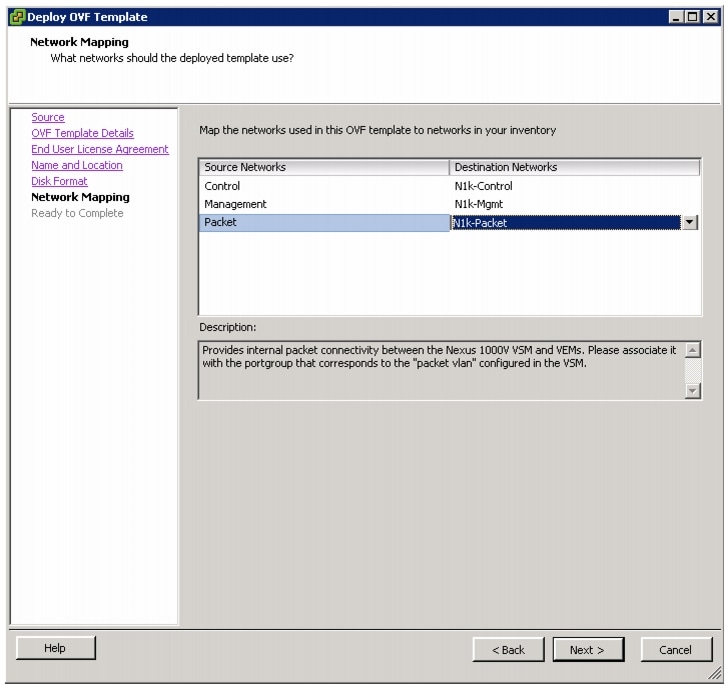

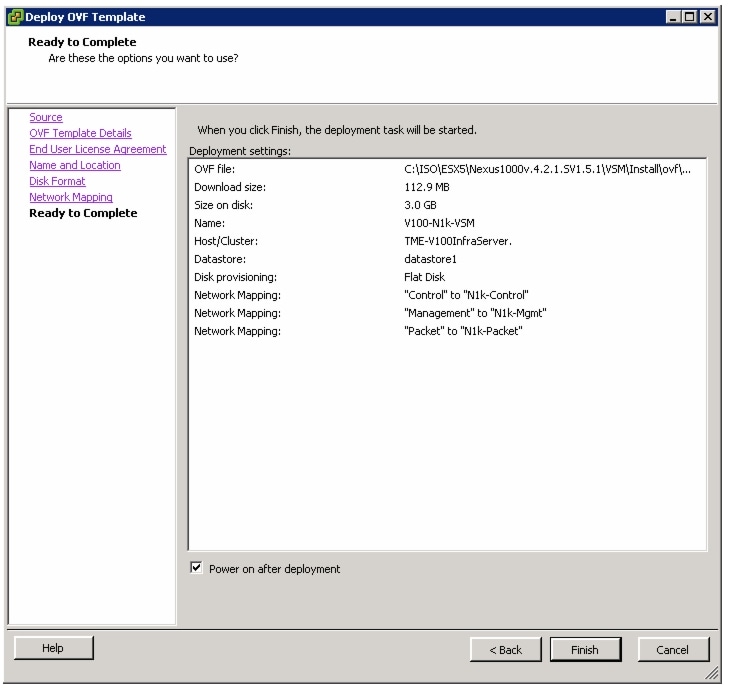

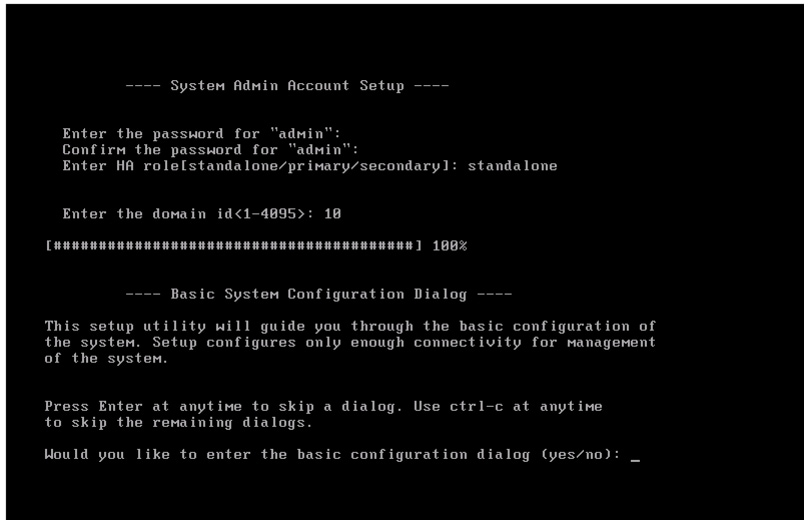

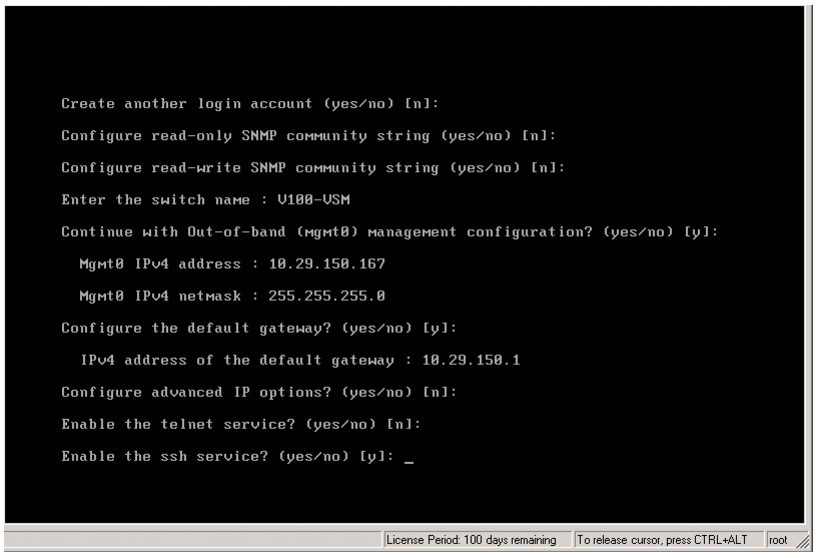

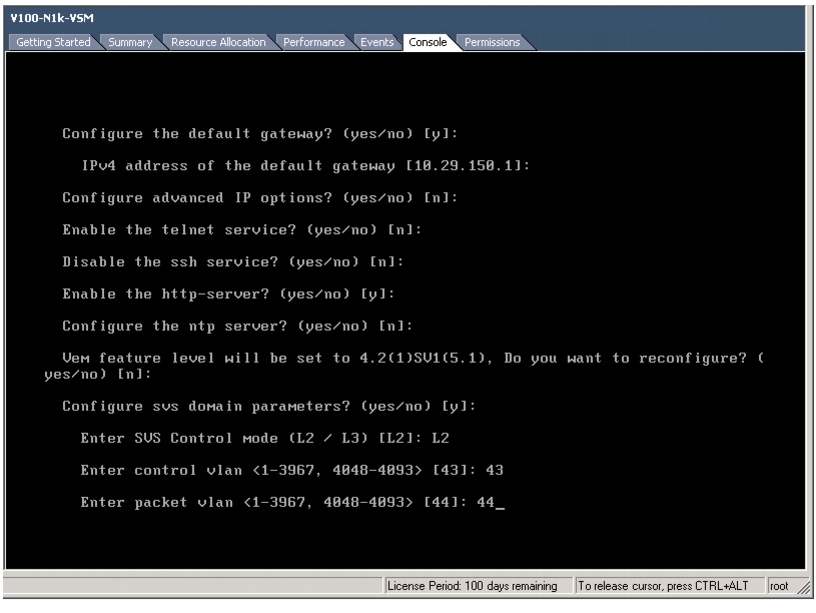

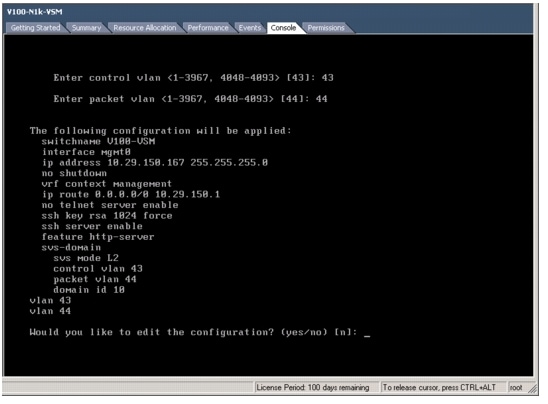

Installing Cisco Nexus 1000v VSM VM

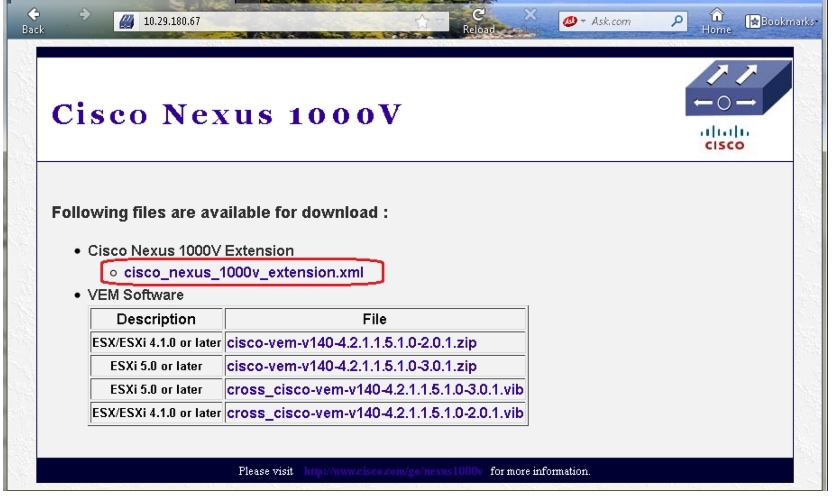

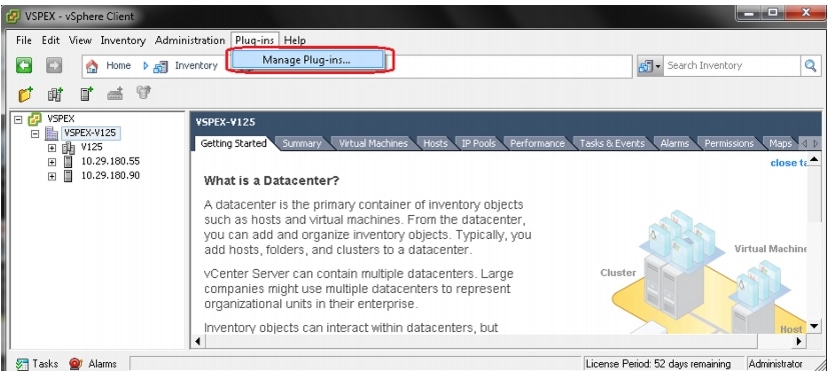

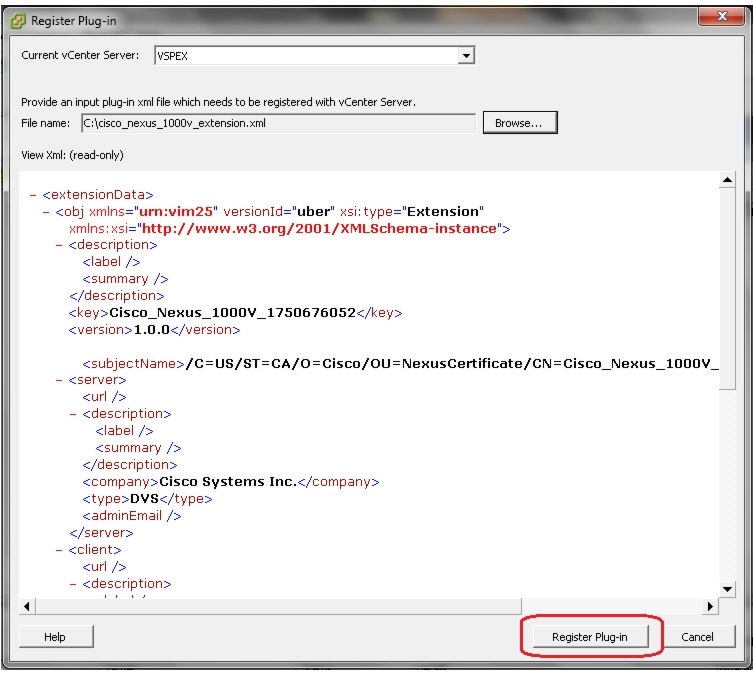

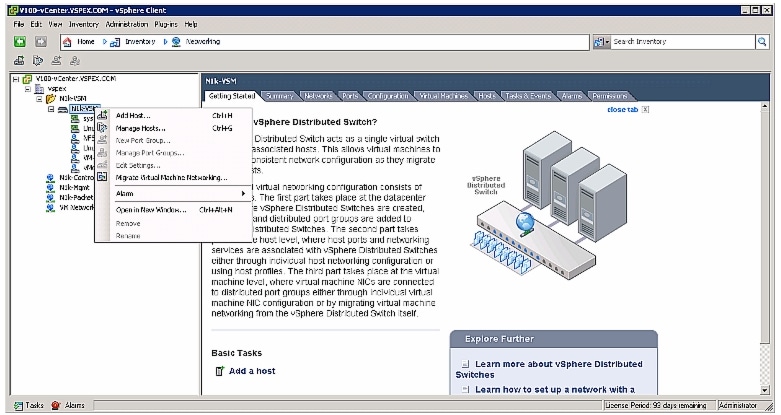

Connecting Cisco Nexus 1000v VSM to VMware vCenter

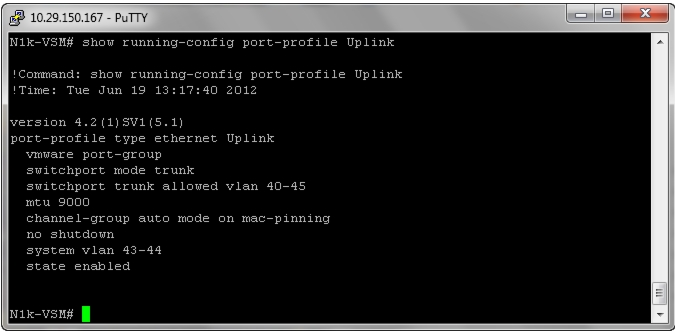

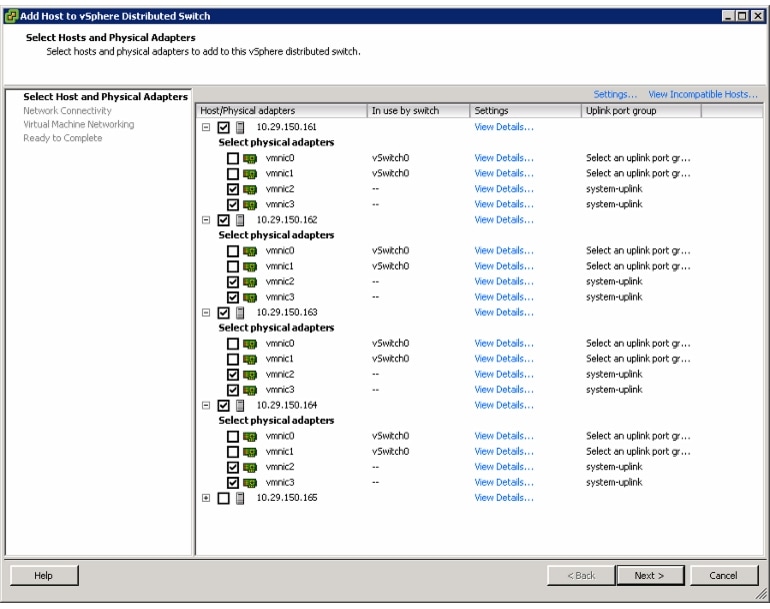

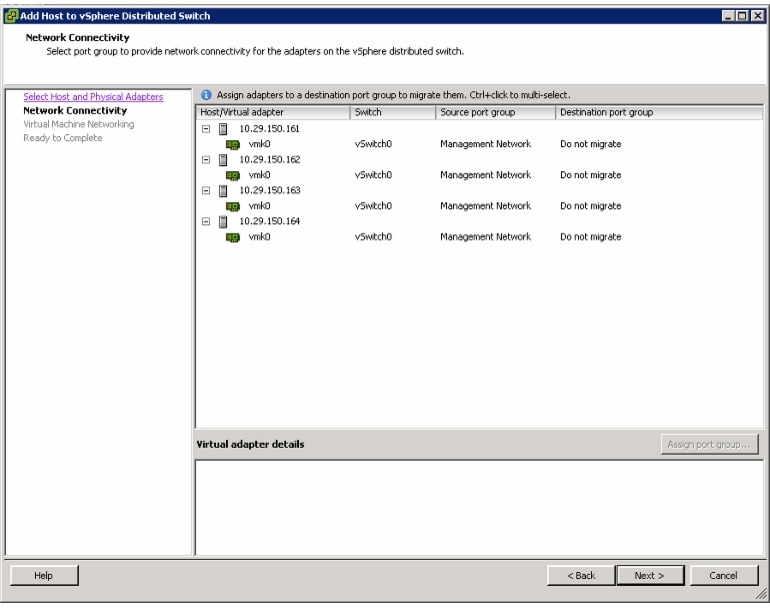

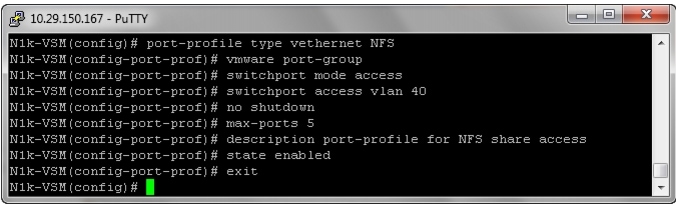

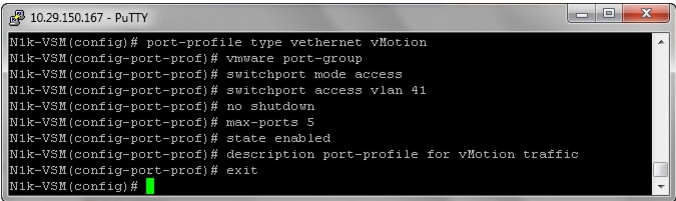

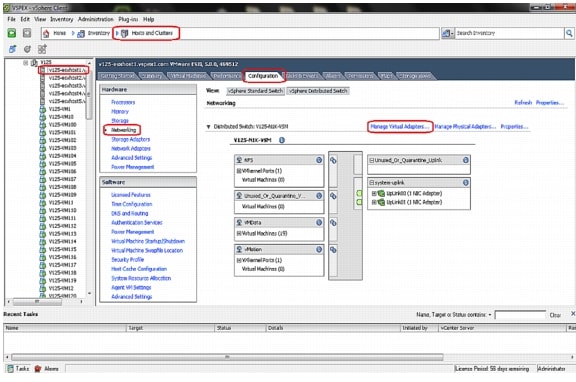

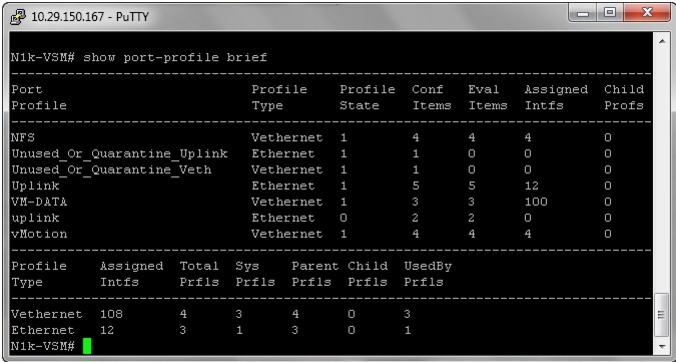

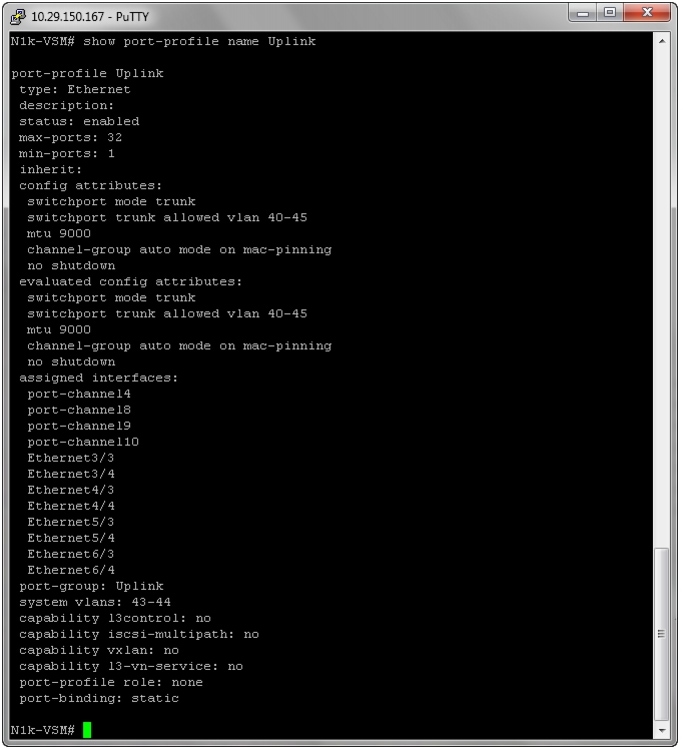

Configuring Port-Profile in VSM and Migrate vCenter Networking to vDS

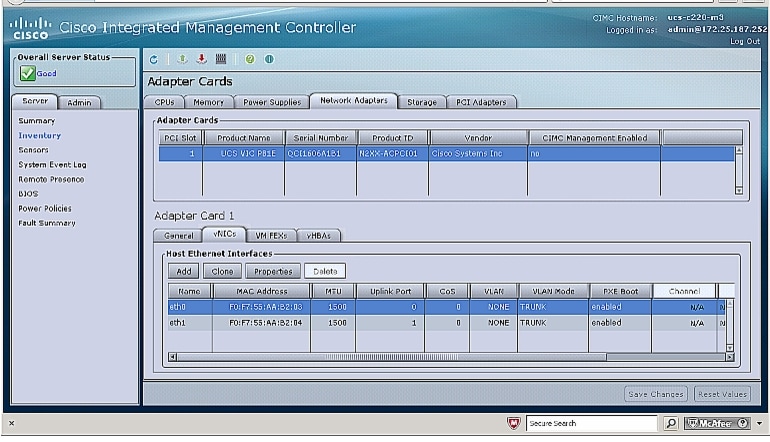

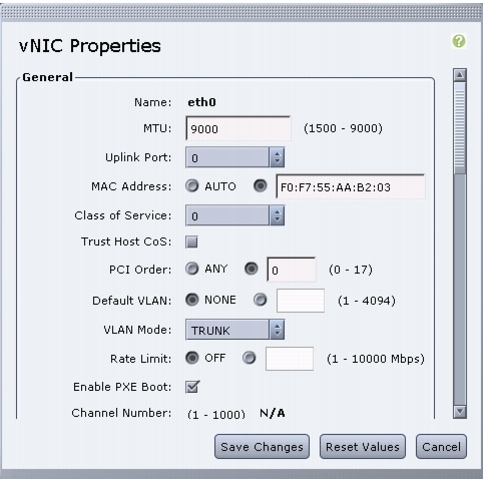

Configuring Jumbo Frame at the CIMC Interface

Validating Cisco Solution for EMC VSPEX VMware Architectures

Customer Configuration Data Sheet

Cisco Solution for EMC VSPEX VMware vSphere 5.0 Architectures

Design for 50, 100 and 125 Virtual Machines

Last Updated: October 24, 2013

Building Architectures to Solve Business Problems

About the Authors

Mehul Bhatt, Virtualization Architect, Server Access Virtualization Business Unit, Cisco Systems

Mehul Bhatt has over 12 years of experience in virtually all layers of computer networking. His focus areas include Cisco Unified Computing System, network, and server virtualization design. Prior to joining the Cisco Technical Marketing team, Mehul was a Technical Lead at Cisco, Nuova Systems, and Blue Coat Systems. Mehul holds a master's degree in computer systems engineering and various Cisco career certifications.

Hardik Patel, Virtualization System Engineer, Server Access Virtualization Business Unit, Cisco Systems

Hardik Patel has over 9 years of experience with server virtualization and core applications in virtual environments, focusing on the design and implementation of systems and virtualization, management and administration, UCS, and storage and network configuration. Hardik holds a master's degree in computer science and various career certifications in virtualization, networking, and Microsoft technologies.

VijayKumar D, Technical Marketing Engineer, Server Access Virtualization Business Unit, Cisco Systems

VijayKumar has over 10 years of experience in UCS, network, storage, and server virtualization design. Vijay has worked on performance and benchmarking on Cisco UCS and has delivered benchmark results on SPEC CPU2006 and SPECjEnterprise2010. Vijay holds VMware Certified Professional and Cisco Unified Computing System Design Specialist certifications.

Acknowledgements

For their support and contribution to the design, validation, and creation of the Cisco Validated Design, we would like to thank:

•

Vadiraja Bhatt-Cisco

•

Bathu Krishnan-Cisco

•

Sindhu Sudhir-Cisco

•

Kevin Phillips-EMC

•

John Moran-EMC

•

Kathy Sharp-EMC

About Cisco Validated Design (CVD) Program

The CVD program consists of systems and solutions designed, tested, and documented to facilitate faster, more reliable, and more predictable customer deployments. For more information visit www.cisco.com/go/designzone.

ALL DESIGNS, SPECIFICATIONS, STATEMENTS, INFORMATION, AND RECOMMENDATIONS (COLLECTIVELY, "DESIGNS") IN THIS MANUAL ARE PRESENTED "AS IS," WITH ALL FAULTS. CISCO AND ITS SUPPLIERS DISCLAIM ALL WARRANTIES, INCLUDING, WITHOUT LIMITATION, THE WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THE DESIGNS, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

THE DESIGNS ARE SUBJECT TO CHANGE WITHOUT NOTICE. USERS ARE SOLELY RESPONSIBLE FOR THEIR APPLICATION OF THE DESIGNS. THE DESIGNS DO NOT CONSTITUTE THE TECHNICAL OR OTHER PROFESSIONAL ADVICE OF CISCO, ITS SUPPLIERS OR PARTNERS. USERS SHOULD CONSULT THEIR OWN TECHNICAL ADVISORS BEFORE IMPLEMENTING THE DESIGNS. RESULTS MAY VARY DEPENDING ON FACTORS NOT TESTED BY CISCO.

CCDE, CCENT, Cisco Eos, Cisco Lumin, Cisco Nexus, Cisco StadiumVision, Cisco TelePresence, Cisco WebEx, the Cisco logo, DCE, and Welcome to the Human Network are trademarks; Changing the Way We Work, Live, Play, and Learn and Cisco Store are service marks; and Access Registrar, Aironet, AsyncOS, Bringing the Meeting To You, Catalyst, CCDA, CCDP, CCIE, CCIP, CCNA, CCNP, CCSP, CCVP, Cisco, the Cisco Certified Internetwork Expert logo, Cisco IOS, Cisco Press, Cisco Systems, Cisco Systems Capital, the Cisco Systems logo, Cisco Unity, Collaboration Without Limitation, EtherFast, EtherSwitch, Event Center, Fast Step, Follow Me Browsing, FormShare, GigaDrive, HomeLink, Internet Quotient, IOS, iPhone, iQuick Study, IronPort, the IronPort logo, LightStream, Linksys, MediaTone, MeetingPlace, MeetingPlace Chime Sound, MGX, Networkers, Networking Academy, Network Registrar, PCNow, PIX, PowerPanels, ProConnect, ScriptShare, SenderBase, SMARTnet, Spectrum Expert, StackWise, The Fastest Way to Increase Your Internet Quotient, TransPath, WebEx, and the WebEx logo are registered trademarks of Cisco Systems, Inc. and/or its affiliates in the United States and certain other countries.

All other trademarks mentioned in this document or website are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (0809R)

© 2012 Cisco Systems, Inc. All rights reserved

Cisco Solution for EMC VSPEX VMware vSphere 5.0 Architectures

Executive Summary

The Cisco solution for EMC VSPEX is a pre-validated, modular architecture built with proven best-of-breed technologies to create a complete end-to-end virtualization solution. The end-to-end solution enables you to make an informed decision when choosing the hypervisor, compute, storage and networking layers. VSPEX eliminates server virtualization planning and configuration burdens. The VSPEX infrastructures accelerate your IT transformation by enabling faster deployments, greater flexibility of choice, efficiency, and lower risk. This Cisco Validated Design document focuses on the VMware architecture for 50, 100 and 125 virtual machines with the Cisco solution for EMC VSPEX.

Introduction

Virtualization is a key strategic deployment model for reducing Total Cost of Ownership (TCO) and achieving better utilization of platform components such as hardware, software, network, and storage. However, choosing an appropriate platform for virtualization can be challenging. Virtualization platforms should be flexible, reliable, and cost effective to facilitate the deployment of various enterprise applications. To use compute, network, and storage resources effectively, a virtualization platform must be able to divide the underlying resources and size them to each application's requirements. The Cisco solution for EMC VSPEX provides a simple yet fully integrated and validated infrastructure to deploy virtual machines in various sizes to suit various application needs.

Target Audience

The reader of this document is expected to have the necessary training and background to install and configure VMware vSphere 5.0, EMC VNXe series, EMC VNX5300, Cisco Nexus 3048 switch, Cisco Nexus 5548UP switch, Cisco Nexus 1000v switch, and Cisco Unified Computing System (UCS) C220 M3 rack servers. External references are provided wherever applicable, and the reader should be familiar with these documents.

Readers are also expected to be familiar with the infrastructure and database security policies of the customer installation.

Purpose of this Guide

This document describes the steps required to deploy and configure the Cisco solution for the EMC VSPEX for VMware architecture. The document covers three types of VMware architectures:

•

VMware vSphere 5.0 for 50 virtual machines

•

VMware vSphere 5.0 for 100 virtual machines

•

VMware vSphere 5.0 for 125 virtual machines

This document focuses on the configuration details that are important to the deployment models mentioned above. Readers are expected to have sufficient knowledge to install and configure the products used.

Business Needs

The VSPEX solutions are built with proven best-of-breed technologies to create complete virtualization solutions that enable you to make an informed decision in the hypervisor, server, and networking layers. The VSPEX infrastructures accelerate your IT transformation by enabling faster deployments, greater flexibility of choice, efficiency, and lower risk.

For more detailed information on server capacity, network interface, and storage configuration, see the EMC VSPEX Server Virtualization Solution VMware vSphere 5 for 125 Virtual Machines Enabled by VMware vSphere 5.0 and EMC VNX5300—Reference Architecture and associated documentation.

Business applications are moving into the consolidated compute, network, and storage environment. The Cisco solution for the EMC VSPEX using VMware reduces the complexity of configuring every component of a traditional deployment model. The complexity of integration management is reduced while maintaining the application design and implementation options. Administration is unified, while process separation can be adequately controlled and monitored. The following are the business needs for the Cisco solution for EMC VSPEX VMware architectures:

•

Provide an end-to-end virtualization solution to utilize the capabilities of the unified infrastructure components.

•

Provide a Cisco VSPEX for VMware ITaaS solution for efficiently virtualizing 50, 100 or 125 virtual machines for varied customer use cases.

•

Show implementation progression of VMware vCenter 5.0 design and the results.

•

Provide a reliable, flexible and scalable reference design.

Solution Overview

The Cisco solution for EMC VSPEX using VMware vSphere 5.0 provides an end-to-end architecture with Cisco, EMC, VMware, and Microsoft technologies that demonstrate support for up to 50, 100 and 125 generic virtual machines and provide high availability and server redundancy.

The following are the components used for the design and deployment:

•

Cisco C-series Unified Computing System servers

•

Cisco Nexus 5000 series or 3000 series switches depending on the scale of the solution

•

Cisco Nexus 1000v virtual switch

•

Cisco virtual Distributed Switch across multiple VMware ESXi hypervisors

•

Cisco virtual Port Channels for network load balancing and high availability

•

EMC VNXe3150, VNXe3300 or VNX5300 storage components as per the scale needs

•

VMware vCenter Server 5.0

•

Microsoft SQL Server database

•

VMware DRS

•

VMware HA

The solution is designed to host scalable, mixed application workloads. The scope of this CVD is limited to the Cisco solution for EMC VSPEX VMware solutions for 50, 100 and 125 virtual machines only.

Technology Overview

Cisco Unified Computing System

The Cisco Unified Computing System is a next-generation data center platform that unites compute, network, and storage access. The platform, optimized for virtual environments, is designed using open industry-standard technologies and aims to reduce total cost of ownership (TCO) and increase business agility. The system integrates a low-latency, lossless 10 Gigabit Ethernet unified network fabric with enterprise-class, x86-architecture servers. It is an integrated, scalable, multichassis platform in which all resources participate in a unified management domain.

The main components of Cisco Unified Computing System are:

•

Computing—The system is based on an entirely new class of computing system that incorporates blade servers based on Intel Xeon 5500/5600 Series Processors. Selected Cisco UCS blade servers offer the patented Cisco Extended Memory Technology to support applications with large datasets and allow more virtual machines per server.

•

Network—The system is integrated onto a low-latency, lossless, 10-Gbps unified network fabric. This network foundation consolidates LANs, SANs, and high-performance computing networks, which are typically separate networks today. The unified fabric lowers costs by reducing the number of network adapters, switches, and cables, and by decreasing power and cooling requirements.

•

Virtualization—The system unleashes the full potential of virtualization by enhancing the scalability, performance, and operational control of virtual environments. Cisco security, policy enforcement, and diagnostic features are now extended into virtualized environments to better support changing business and IT requirements.

•

Storage access—The system provides consolidated access to both SAN storage and Network Attached Storage (NAS) over the unified fabric. By unifying storage access, the Cisco Unified Computing System can access storage over Ethernet, Fibre Channel, Fibre Channel over Ethernet (FCoE), and iSCSI. This gives customers a choice of storage access and investment protection. In addition, server administrators can pre-assign storage-access policies for system connectivity to storage resources, simplifying storage connectivity and management for increased productivity.

•

Management—The system uniquely integrates all system components, enabling the entire solution to be managed as a single entity by Cisco UCS Manager. Cisco UCS Manager has an intuitive graphical user interface (GUI), a command-line interface (CLI), and a robust application programming interface (API) to manage all system configuration and operations.

The Cisco Unified Computing System is designed to deliver:

•

A reduced Total Cost of Ownership and increased business agility.

•

Increased IT staff productivity through just-in-time provisioning and mobility support.

•

A cohesive, integrated system which unifies the technology in the data center. The system is managed, serviced and tested as a whole.

•

Scalability through a design for hundreds of discrete servers and thousands of virtual machines and the capability to scale I/O bandwidth to match demand.

•

Industry standards supported by a partner ecosystem of industry leaders.

Cisco C220 M3 Rack-Mount Servers

Building on the success of earlier Cisco UCS C-Series rack servers, the enterprise-class Cisco UCS C220 M3 server further extends the capabilities of the Cisco Unified Computing System portfolio in a 1-rack-unit (1RU) form factor. With the addition of the Intel® Xeon® processor E5-2600 product family, it delivers significant performance and efficiency gains. Figure 1 shows the Cisco UCS C220 M3 rack server.

Figure 1 Cisco UCS C220 M3 Rack Server

The Cisco UCS C220 M3 also offers up to 256 GB of RAM, eight drives or SSDs, and two 1GE LAN interfaces built into the motherboard, delivering outstanding levels of density and performance in a compact package.

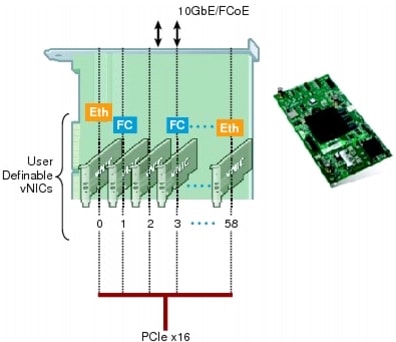

I/O Adapters

The Cisco UCS rack-mount servers offer various Converged Network Adapter (CNA) options. The Cisco UCS P81E Virtual Interface Card (VIC) is used in this Cisco Validated Design.

The Cisco UCS P81E VIC is unique to the Cisco UCS Rack-Mount Server system. This PCIe adapter is designed around a custom ASIC that is specifically intended for virtualized systems. As with the other Cisco CNAs, the Cisco UCS P81E VIC encapsulates Fibre Channel traffic within 10 Gigabit Ethernet frames for delivery to the Ethernet network.

The Cisco UCS P81E VIC can create multiple vNICs (up to 128) on the CNA. This allows complete I/O configurations to be provisioned in virtualized or non-virtualized environments using just-in-time provisioning, providing tremendous system flexibility and allowing consolidation of multiple physical adapters.

System security and manageability are improved by providing visibility and portability of network policies and security all the way to the virtual machines. Additional P81E features, such as VN-Link technology and pass-through switching, minimize implementation overhead and complexity. Figure 2 shows the Cisco UCS P81E VIC.

Figure 2 Cisco UCS P81E VIC

Cisco Nexus 5548UP Switch

The Cisco Nexus 5548UP is a 1RU 1 Gigabit and 10 Gigabit Ethernet switch offering up to 960 gigabits per second of throughput and scaling up to 48 ports. It offers 32 fixed 1/10 Gigabit Ethernet enhanced Small Form-Factor Pluggable (SFP+) unified ports, each supporting Ethernet/FCoE or 1/2/4/8-Gbps native Fibre Channel, and one expansion slot. The expansion module can provide a combination of Ethernet/FCoE and native Fibre Channel ports. The Cisco Nexus 5548UP switch is shown in Figure 3.

Figure 3 Cisco Nexus 5548UP switch

Cisco Nexus 3048 Switch

The Cisco Nexus® 3048 Switch is a line-rate Gigabit Ethernet top-of-rack (ToR) switch and is part of the Cisco Nexus 3000 Series Switches portfolio. The Cisco Nexus 3048, with its compact one-rack-unit (1RU) form factor and integrated Layer 2 and 3 switching, complements the existing Cisco Nexus family of switches. This switch runs the industry-leading Cisco® NX-OS Software operating system, providing customers with robust features and functions that are deployed in thousands of data centers worldwide. The Cisco Nexus 3048 switch is shown in Figure 4.

Figure 4 Cisco Nexus 3048 Switch

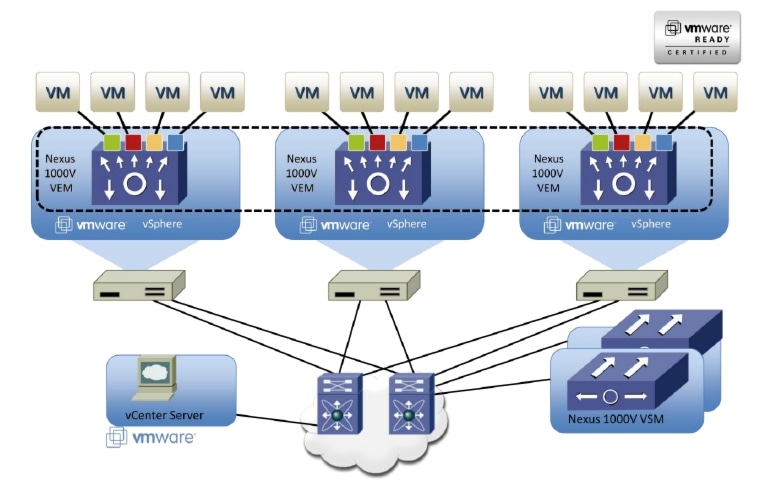

Cisco Nexus 1000v Virtual Switch

Nexus 1000v is a virtual Ethernet switch with two components:

•

Virtual Supervisor Module (VSM)—the control plane of the virtual switch that runs NX-OS.

•

Virtual Ethernet Module (VEM)—a virtual line card embedded into each VMware vSphere hypervisor host (ESXi).

Virtual Ethernet Modules across multiple ESXi hosts form a virtual Distributed Switch (vDS). Using the Cisco vDS VMware plug-in, the Virtual Interface Card (VIC) can discover the dynamic Ethernet interfaces and register all of them as uplink interfaces for use by the vDS. The vDS component on each host discovers the number of uplink interfaces that it has and presents a switch to the virtual machines running on the host. All traffic from a virtual machine interface is sent to the corresponding port of the vDS switch and then out to the host's physical link through the special uplink port-profile. This vDS implementation provides consistent features across hosts and better integration of host virtualization with the rest of the Ethernet fabric in the data center.

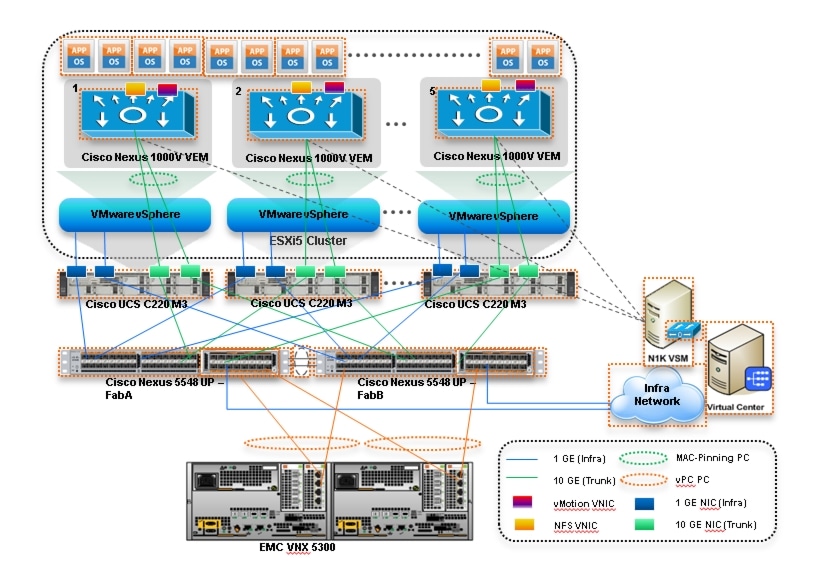

The Cisco Nexus 1000v vDS architecture is shown in Figure 5.

Figure 5 Cisco Nexus 1000v Switch

VMware vSphere 5.0

VMware vSphere 5.0 is a next-generation virtualization solution from VMware that builds upon vSphere 4 (ESX/ESXi 4) and provides greater levels of scalability, security, and availability to virtualized environments. vSphere 5.0 offers improvements in performance and utilization of CPU, memory, and I/O. It also allows users to assign up to 32 virtual CPUs to a virtual machine, giving system administrators more flexibility in their virtual server farms as processor-intensive workloads continue to increase.

vSphere 5.0 includes VMware vCenter Server, which allows system administrators to manage their ESXi hosts and virtual machines from a centralized management platform. With the Cisco Nexus 1000v virtual switch integrated into vCenter Server, deploying and administering virtual machines is similar to deploying and administering physical servers. Network administrators can continue to own the responsibility for configuring and monitoring network resources for virtualized servers as they did with physical servers. System administrators can continue to "plug in" their virtual machines to network ports whose Layer 2 configurations, port access and security policies, monitoring features, and so on, have been pre-defined by the network administrators, in the same way that they plug their physical servers into a previously configured access switch. In this virtualized environment, the network port configuration and policies move with the virtual machines when the virtual machines are migrated to different server hardware.
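The division of labor described above (network administrators define port policies once, system administrators attach virtual machines to them, and the policy follows the virtual machine across hosts) can be sketched as a toy model. This is a minimal illustration under stated assumptions, not the Nexus 1000v or vCenter API: the class names, the `vm-data` profile, VLAN 100, and the host names `esxi-01`/`esxi-02` are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PortProfile:
    """Network policy defined once by the network administrator (hypothetical fields)."""
    name: str
    access_vlan: int
    mtu: int = 1500


@dataclass
class VirtualMachine:
    name: str
    host: str
    # vNIC name -> port-profile name chosen by the system administrator
    vnics: dict = field(default_factory=dict)


class DistributedSwitch:
    """Toy model of one logical switch spanning many hosts, as a vDS does."""

    def __init__(self) -> None:
        self.profiles: dict[str, PortProfile] = {}

    def define_profile(self, profile: PortProfile) -> None:
        self.profiles[profile.name] = profile

    def effective_policy(self, vm: VirtualMachine, vnic: str) -> PortProfile:
        # The policy is looked up by profile name, never by host, so the
        # result is the same on every host the VM might run on.
        return self.profiles[vm.vnics[vnic]]


def migrate(vm: VirtualMachine, new_host: str) -> None:
    # Only the VM's placement changes; its port-profile binding travels with it.
    vm.host = new_host


if __name__ == "__main__":
    dvs = DistributedSwitch()
    dvs.define_profile(PortProfile("vm-data", access_vlan=100))
    vm = VirtualMachine("app01", host="esxi-01", vnics={"eth0": "vm-data"})
    before = dvs.effective_policy(vm, "eth0")
    migrate(vm, "esxi-02")
    print(vm.host, dvs.effective_policy(vm, "eth0") == before)
```

The key design point the sketch captures is that the binding is by profile name rather than by physical port, which is why a migration does not require any network reconfiguration.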

EMC Storage Technologies and Benefits

The EMC VNX™ family is optimized for virtual applications, delivering industry-leading innovation and enterprise capabilities for file, block, and object storage in a scalable, easy-to-use solution. This next-generation storage platform combines powerful and flexible hardware with advanced efficiency, management, and protection software to meet the demanding needs of today's enterprises.

The VNXe™ series is powered by Intel Xeon processors for intelligent storage that automatically and efficiently scales in performance while ensuring data integrity and security.

The VNXe series is purpose-built for IT managers in smaller environments, and the VNX series is designed to meet the high-performance, high-scalability requirements of midsize and large enterprises. The EMC VNXe and VNX storage arrays are multiprotocol platforms that can support the iSCSI, NFS, and CIFS protocols, depending on the customer's specific needs. The solution was validated using NFS for data storage.

VNXe series storage arrays offer the following customer benefits:

•

Next-generation unified storage, optimized for virtualized applications

•

Capacity optimization features including compression, deduplication, thin provisioning, and application-centric copies

•

High availability, designed to deliver five 9s (99.999 percent) availability

•

Multiprotocol support for file and block

•

Simplified management with EMC Unisphere™ for a single management interface for all network-attached storage (NAS), storage area network (SAN), and replication needs
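To put the five 9s availability target from the list above in concrete terms, the following short calculation converts an availability percentage into the maximum unplanned downtime per year. It assumes an average year of 365.25 days; five 9s works out to roughly 5.26 minutes of downtime per year.

```python
MINUTES_PER_YEAR = 365.25 * 24 * 60  # average year length, including leap years


def downtime_minutes_per_year(availability_pct: float) -> float:
    """Maximum unplanned downtime per year allowed by an availability percentage."""
    return (1 - availability_pct / 100) * MINUTES_PER_YEAR


for label, pct in [("three 9s", 99.9), ("four 9s", 99.99), ("five 9s", 99.999)]:
    print(f"{label} ({pct}%): {downtime_minutes_per_year(pct):.2f} minutes/year")
```

Each additional 9 reduces the allowed downtime by a factor of ten.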

Software Suites

The following are the available EMC software suites:

•

Remote Protection Suite—Protects data against localized failures, outages, and disasters.

•

Application Protection Suite—Automates application copies and proves compliance.

•

Security and Compliance Suite—Keeps data safe from changes, deletions, and malicious activity.

Software Packs

The following EMC protection software pack is available:

•

Total Value Pack—Includes all protection software suites and the Security and Compliance Suite.

EMC Avamar

EMC Avamar® data deduplication technology integrates seamlessly into virtual environments, providing rapid backup and restoration capabilities. Avamar deduplication results in far less data traversing the network and greatly reduces the amount of data being backed up and stored, resulting in storage, bandwidth, and operational savings.
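The reason deduplication reduces network traffic can be shown with a small sketch: data is split into chunks, each chunk is identified by a hash, and only chunks the backup target has never seen are sent and stored. This is a simplified fixed-size-chunk illustration, not Avamar's actual algorithm; the 4 KiB chunk size, the use of SHA-256, and the `DedupStore` class are assumptions made for the example. In this sketch, a second backup of a mostly unchanged 16 KiB image sends only its 8 new bytes.

```python
import hashlib

CHUNK_SIZE = 4096  # fixed chunk size chosen for illustration only


def split_chunks(data: bytes, size: int = CHUNK_SIZE):
    """Yield consecutive fixed-size chunks (the last one may be shorter)."""
    for start in range(0, len(data), size):
        yield data[start:start + size]


class DedupStore:
    """Hypothetical backup target that stores each unique chunk exactly once."""

    def __init__(self) -> None:
        self.chunks: dict[str, bytes] = {}  # SHA-256 digest -> chunk contents

    def backup(self, data: bytes) -> tuple[list[str], int]:
        """Return the recipe (ordered digests) and the bytes that had to be sent."""
        recipe, bytes_sent = [], 0
        for chunk in split_chunks(data):
            digest = hashlib.sha256(chunk).hexdigest()
            if digest not in self.chunks:
                # Only chunks the store has never seen cross the network.
                self.chunks[digest] = chunk
                bytes_sent += len(chunk)
            recipe.append(digest)
        return recipe, bytes_sent

    def restore(self, recipe: list[str]) -> bytes:
        """Rebuild the original data stream from its recipe."""
        return b"".join(self.chunks[d] for d in recipe)


if __name__ == "__main__":
    store = DedupStore()
    image = bytes(i % 251 for i in range(16384))  # 16 KiB stand-in for a VM disk
    _, first = store.backup(image)
    recipe, second = store.backup(image + b"new data")
    print(first, second, store.restore(recipe) == image + b"new data")
```

Fixed-size chunking has a known weakness: inserting data near the start of a file shifts every later chunk boundary, so unchanged data no longer matches. Production deduplication systems commonly use variable-length, content-defined segmentation to avoid this.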

The following are the two most common recovery requests used in backup and recovery:

•

File-level recovery: Object-level recoveries account for the vast majority of user support requests. Common causes of file-level recovery requests include individual users deleting files, applications requiring recoveries, and batch process-related erasures.

•

System recovery: Although complete system recovery requests are less frequent than file-level recovery requests, this bare-metal restore capability is vital to the enterprise. Common root causes for full system recovery requests include viral infection, registry corruption, and unidentifiable unrecoverable issues.

The Avamar System State protection functionality adds backup and recovery capabilities in both of these scenarios.

Architectural Overview

This CVD discusses the deployment model for the following three VMware virtualization solutions:

•

VMware solution for 50 virtual machines

•

VMware solution for 100 virtual machines

•

VMware solution for 125 virtual machines

Table 1 lists the mix of hardware components, their quantities and software components used for different VMware solutions:

Table 2 lists the hardware and software components that occupy the different tiers of the Cisco solution for EMC VSPEX VMware architectures under test.

Table 3 outlines the C220 M3 server configuration details (per server basis) across all the VMware architectures.

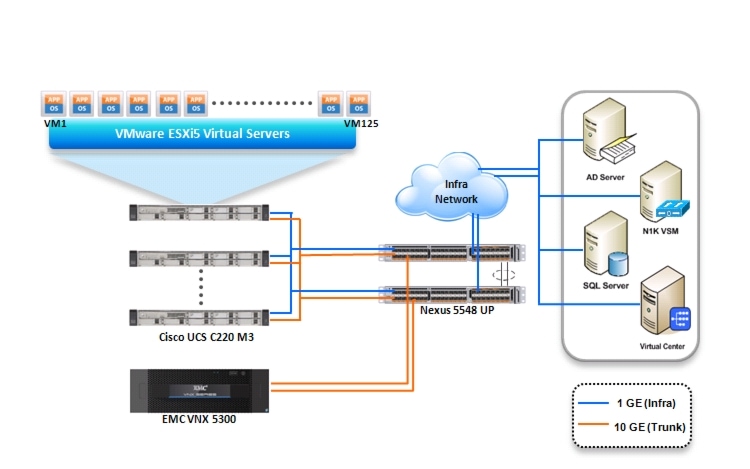

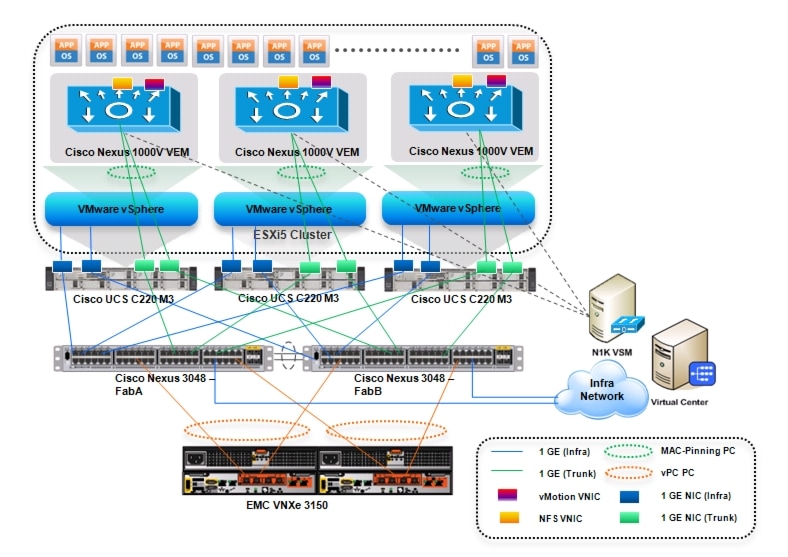

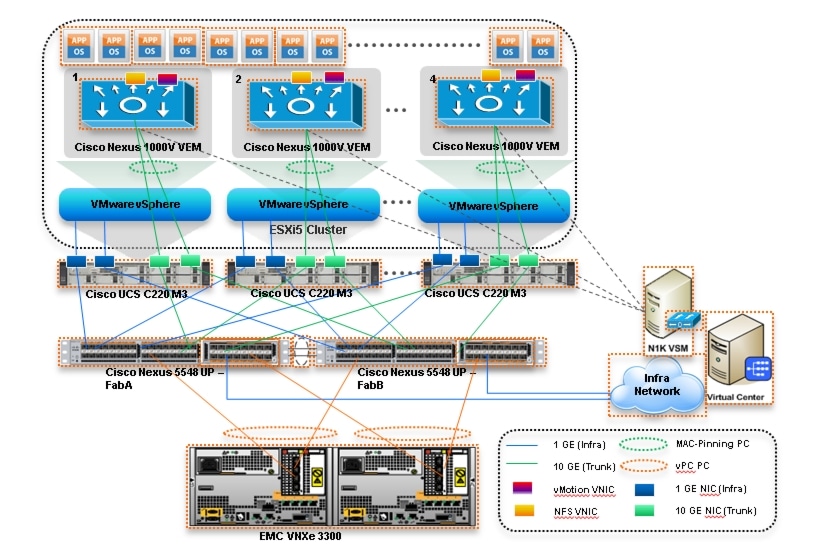

All three reference architectures assume that there is an existing infrastructure/management network where a virtual machine hosting vCenter Server and a Windows Active Directory/DNS server are present. A new virtual machine hosting the Nexus 1000v VSM is deployed as part of the Cisco solution for EMC VSPEX architecture. Figure 6, Figure 7, and Figure 8 illustrate the high-level solution architecture for 50, 100 and 125 virtual machines.

Figure 6 Reference Architecture for 50 Virtual Machines

Figure 7 Reference Architecture for 100 Virtual Machines

Figure 8 Reference Architecture for 125 Virtual Machines

As Figure 6, Figure 7, and Figure 8 illustrate, the high-level design points of the VMware architectures are as follows:

•

Only Ethernet is used as the Layer 2 medium, for both storage access and TCP/IP networking

•

Infrastructure network is on a separate 1GE network

•

Network redundancy is built in by providing two switches, two storage controllers and redundant connectivity for data, storage and infrastructure networking.

This design does not recommend or require any specific layout of the infrastructure network. The VMware vCenter Server and Cisco Nexus 1000v VSM virtual machines are hosted on the infrastructure network. However, the design does require that certain VLANs be accessible from the infrastructure network to reach the servers.

ESXi 5.0 is used as the hypervisor on each server and is installed on local hard drives. The typical load is 25 virtual machines per server.
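The typical load of 25 virtual machines per server leads to a simple host-count estimate. The sketch below divides the virtual machine count by that load, rounds up, and adds spare hosts for failover headroom. The one-spare-host (N+1) default is an illustrative assumption, not a statement of the validated configurations; Table 1 lists the actual server quantities for each architecture.

```python
import math

VMS_PER_SERVER = 25  # typical load per ESXi host stated in this design


def servers_needed(vm_count: int, vms_per_server: int = VMS_PER_SERVER,
                   spare_hosts: int = 1) -> int:
    """Hosts needed to carry vm_count VMs, plus spare hosts for failover headroom."""
    return math.ceil(vm_count / vms_per_server) + spare_hosts


for scale in (50, 100, 125):
    print(f"{scale} VMs: {servers_needed(scale)} servers with one spare host")
```

With one spare host, the surviving hosts can still carry the full load at 25 virtual machines each if any single server fails, which is the condition VMware HA needs in order to restart the affected virtual machines.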

Memory Configuration Guidelines

This section provides guidelines for allocating memory to the virtual machines. The guidelines outlined here take into account vSphere memory overhead and the virtual machine memory settings.

ESX/ESXi Memory Management Concepts

VMware vSphere virtualizes guest physical memory by adding an extra level of address translation. Shadow page tables make it possible to provide this additional translation with little or no overhead. Managing memory in the hypervisor enables the following:

•

Memory sharing across virtual machines that have similar data (that is, same guest operating systems).

•

Memory overcommitment, which means allocating more memory to virtual machines than is physically available on the ESX/ESXi host.

•

A memory balloon technique whereby virtual machines that do not need all the memory they were allocated give memory to virtual machines that require additional allocated memory.
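The extra level of address translation described above, and how a shadow page table removes the cost of the second lookup, can be sketched with toy single-level page tables. The page numbers below are hypothetical, and real x86 hardware uses multi-level page tables; on processors with hardware-assisted MMU virtualization (Intel EPT, AMD RVI), the hardware performs this composition instead of software-maintained shadow tables.

```python
PAGE_SIZE = 4096  # bytes per page


def translate(page_table: dict[int, int], address: int) -> int:
    """Map an address through a single-level table of page number -> frame number."""
    page, offset = divmod(address, PAGE_SIZE)
    return page_table[page] * PAGE_SIZE + offset


def build_shadow(guest_pt: dict[int, int], pmap: dict[int, int]) -> dict[int, int]:
    """Compose guest-virtual -> guest-physical with guest-physical -> machine
    into a single guest-virtual -> machine table (the 'shadow')."""
    return {gv: pmap[gp] for gv, gp in guest_pt.items() if gp in pmap}


guest_pt = {0: 7, 1: 3}  # maintained by the guest OS (hypothetical values)
pmap = {3: 42, 7: 19}    # maintained by the hypervisor (hypothetical values)
shadow = build_shadow(guest_pt, pmap)

va = 1 * PAGE_SIZE + 0x10
two_lookups = translate(pmap, translate(guest_pt, va))  # what virtualization implies
one_lookup = translate(shadow, va)                      # what the shadow table provides
print(hex(two_lookups), two_lookups == one_lookup)
```

The hypervisor must keep the shadow table consistent whenever the guest changes its own page table; that maintenance work is the cost traded for single-lookup translation.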

For more information about vSphere memory management concepts, see the VMware vSphere Resource Management Guide.

Virtual Machine Memory Concepts

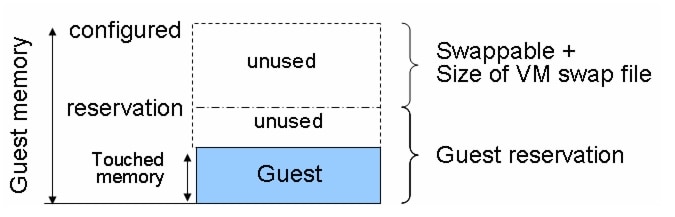

Figure 9 illustrates the use of memory settings parameters in the virtual machine.

Figure 9 Virtual Machine Memory Settings

The VMware vSphere memory settings for a virtual machine include the following parameters:

•

Configured memory—Memory size of virtual machine assigned at creation.

•

Touched memory—Memory actually used by the virtual machine. VMware vSphere allocates guest operating system memory only on demand.

•

Swappable—Virtual machine memory can be reclaimed by the balloon driver or by VMware vSphere swapping. Ballooning occurs before VMware vSphere swapping. If this memory is in use by the virtual machine (that is, touched and in use), the balloon driver causes the guest operating system to swap.
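The relationship between these settings can be expressed in a few lines. In vSphere, memory covered by a reservation is not reclaimed, so the swappable portion is configured memory minus the reservation, which is also the size of the per-virtual-machine swap file. The `reclaim` function below is a simplified model, not vSphere's actual reclamation logic: it assumes that only touched memory above the reservation can be taken back, tries ballooning first as described above, and uses a hypothetical `balloon_limit_mb` parameter to cap how much the balloon driver can return.

```python
from dataclasses import dataclass


@dataclass
class VmMemory:
    configured_mb: int   # size assigned when the VM is created
    reservation_mb: int  # guaranteed to the VM; never ballooned or swapped
    touched_mb: int      # memory the guest has actually used so far

    def swappable_mb(self) -> int:
        """Memory the host may reclaim; also the size of the per-VM swap file."""
        return self.configured_mb - self.reservation_mb


def reclaim(vm: VmMemory, needed_mb: int, balloon_limit_mb: int) -> tuple[int, int]:
    """Split a reclaim request into (ballooned, host-swapped) megabytes.

    Simplified model: only touched memory above the reservation is backed by
    host memory that can be taken back, and ballooning is tried before swapping.
    """
    reclaimable = max(0, min(vm.touched_mb, vm.configured_mb) - vm.reservation_mb)
    target = min(needed_mb, reclaimable)
    ballooned = min(target, balloon_limit_mb)
    return ballooned, target - ballooned


if __name__ == "__main__":
    vm = VmMemory(configured_mb=2048, reservation_mb=512, touched_mb=1800)
    print(vm.swappable_mb(), reclaim(vm, needed_mb=2000, balloon_limit_mb=1000))
```

Raising the reservation shrinks both the swappable portion and the swap file, which is why reservations are recommended for virtual machines that need dedicated resources.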

Allocating Memory to Virtual Machines

Memory sizing for a virtual machine in VSPEX architectures is based on many factors. Given the number of application services and use cases available, determining a suitable configuration for an environment requires creating a baseline configuration, testing, and making adjustments, as discussed later in this paper. Table 4 outlines the resources used by a single virtual machine:

Following are the recommended best practices:

•

Account for memory overhead—Virtual machines require memory beyond the amount allocated, and this overhead is incurred per virtual machine. Memory overhead includes space reserved for virtual machine devices, such as the SVGA frame buffer, and for internal data structures. The amount of overhead required depends on the number of vCPUs, the configured memory, and whether the guest operating system is 32-bit or 64-bit. For example, a running virtual machine with one virtual CPU and 2 GB of memory may consume about 100 MB of memory overhead, whereas a virtual machine with two virtual CPUs and 32 GB of memory may consume approximately 500 MB. This overhead is in addition to the memory allocated to the virtual machine and must be available on the ESXi host.

•

"Right-size" memory allocations—Over-allocating memory to virtual machines not only wastes memory but also increases the memory overhead required to run each virtual machine, reducing the overall memory available for other virtual machines. Fine-tuning the memory of a virtual machine is done easily and quickly by adjusting the virtual machine properties. In most cases, memory hot-add is supported and can provide immediate access to additional memory if needed.

•

Intelligently overcommit—Memory management features in VMware vSphere allow overcommitment of physical resources without severely impacting performance. Many workloads can participate in this type of resource sharing while continuing to provide the responsiveness users require of the application. When looking to scale beyond the underlying physical resources, consider the following:

–

Establish a baseline before overcommitting. Note the performance characteristics of the application before and after. Some applications are consistent in how they use resources and may not perform as expected when VMware vSphere memory management techniques take control. Others, such as Web servers, have periods where resources can be reclaimed and are good candidates for higher levels of consolidation.

–

Use the default balloon driver settings. The balloon driver is installed as part of the VMware Tools suite and is used by ESXi if physical memory comes under contention. Performance tests show that the balloon driver allows ESXi to reclaim memory, if required, with little to no impact on performance. Disabling the balloon driver forces ESXi to use host swapping to make up for the lack of available physical memory, which adversely affects performance.

–

Set a memory reservation for virtual machines that require dedicated resources. Virtual machines running Search or SQL services consume more memory resources than other application and Web front-end virtual machines. In these cases, memory reservations can guarantee that the services have the resources they require while still allowing high consolidation of other virtual machines.

As with overcommitted CPU resources, proactive monitoring is a requirement. Table 5 lists counters that can be monitored to avoid performance issues resulting from overcommitted memory.
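The overhead and overcommitment guidance above reduces to simple arithmetic. The following Python sketch totals configured memory plus per-VM overhead and reports the overcommitment ratio for a set of virtual machines. It assumes the overhead figures quoted above (about 100 MB for 1 vCPU/2 GB and about 500 MB for 2 vCPUs/32 GB) and a hypothetical 32 GB host; real overhead values vary by configuration and should be read from vSphere.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VmMemory:
    """Memory profile of one virtual machine, in MB."""
    name: str
    configured_mb: int
    overhead_mb: int  # Illustrative per-VM overhead; read real values from vSphere.


def required_host_memory_mb(vms: list[VmMemory]) -> int:
    """Memory needed to back every VM without reclamation: configured memory plus overhead."""
    return sum(vm.configured_mb + vm.overhead_mb for vm in vms)


def overcommit_ratio(vms: list[VmMemory], host_physical_mb: int) -> float:
    """Configured VM memory divided by host physical memory (> 1.0 means overcommitted)."""
    if host_physical_mb <= 0:
        raise ValueError("host_physical_mb must be positive")
    return sum(vm.configured_mb for vm in vms) / host_physical_mb


if __name__ == "__main__":
    vms = [
        # Overhead figures taken from the examples in the memory overhead guideline.
        VmMemory("1vcpu-2gb", configured_mb=2 * 1024, overhead_mb=100),
        VmMemory("2vcpu-32gb", configured_mb=32 * 1024, overhead_mb=500),
    ]
    host_mb = 32 * 1024  # Hypothetical host size, chosen only for illustration.
    print(required_host_memory_mb(vms))             # 35416
    print(f"{overcommit_ratio(vms, host_mb):.4f}")  # 1.0625
```

A ratio above 1.0 is where ballooning, page sharing, compression, and swapping start to matter, which is why the counters in Table 5 should be monitored.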

Storage Guidelines

The VSPEX architectures for 50, 100, and 125 VMware virtual machines use NFS to access the storage arrays. This simplifies design and implementation for small and medium-sized businesses. VMware vSphere provides many features that take advantage of EMC storage technologies, such as the VNX VAAI plug-in for NFS storage and storage replication. Features such as VMware vMotion, VMware HA, and VMware Distributed Resource Scheduler (DRS) use these storage technologies to provide high availability, resource balancing, and uninterrupted workload migration.

Virtual Server Configuration

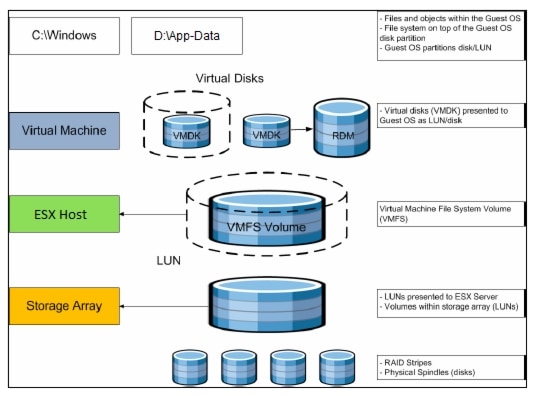

As Figure 10 shows, VMware storage virtualization can be categorized into three layers of storage technology:

•

The storage array is the bottom layer. It consists of physical disks that are presented as logical storage (storage array volumes or LUNs) to the VMware vSphere virtual environment in the layer above.

•

Storage array volumes that are exported over NFS are mounted as NFS datastores, which provide storage for virtual disks.

•

Virtual disks are presented to the virtual machine and guest operating system as locally attached disks, which can be partitioned and used in file systems.

Figure 10 VMware Storage Virtualization Stack

Storage Protocol Capabilities

VMware vSphere provides vSphere and storage administrators with the flexibility to use the storage protocol that meets the requirements of the business. This can be a single protocol datacenter-wide, such as iSCSI, or multiple protocols for tiered scenarios, such as Fibre Channel for high-throughput storage pools and NFS for high-capacity storage pools.

For the VSPEX solution on VMware vSphere, NFS is the recommended option because it is simple to deploy.

For more information, see the VMware white paper Comparison of Storage Protocol Performance in VMware vSphere 5: http://www.vmware.com/files/pdf/perf_vsphere_storage_protocols.pdf

Storage Best Practices

Following are the VMware vSphere storage best practices:

•

Host multi-pathing—Having a redundant set of paths to the storage area network is critical to protecting the availability of your environment. This redundancy can be in the form of dual adapters connected to separate fabric switches, or a set of teamed network interface cards for NFS.

•

Partition alignment—Partition misalignment can lead to severe performance degradation because I/O operations must cross track boundaries. Partition alignment is important both at the NFS level and within the guest operating system. Use the VMware vSphere Client when creating NFS datastores to be sure they are created aligned. When formatting volumes within the guest, note that Windows Server 2008 aligns NTFS partitions on a 1024 KB offset by default.

•

Use shared storage—In a VMware vSphere environment, many of the features that provide the flexibility in management and operational agility come from the use of shared storage. Features such as VMware HA, DRS, and vMotion take advantage of the ability to migrate workloads from one host to another host while reducing or eliminating the downtime required to do so.

•

Calculate your total virtual machine size requirements—Each virtual machine requires more space than its virtual disks alone. Consider a virtual machine with a 20 GB OS virtual disk and 16 GB of allocated memory. This virtual machine requires 20 GB for the virtual disk, 16 GB for the virtual machine swap file (the size of allocated memory), and 100 MB for log files, for a total of 36.1 GB (total virtual disk size + configured memory + 100 MB).

•

Understand I/O Requirements—Under-provisioned storage can significantly slow responsiveness and performance for applications. In a multitier application, you can expect each tier of the application to have different I/O requirements. As a general recommendation, pay close attention to the number of virtual machine disk files hosted on a single NFS volume. Over-subscription of I/O resources can go unnoticed at first and then slowly degrade performance if it is not monitored proactively.
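The sizing and alignment checks in this list can be scripted when planning datastores. The following Python sketch computes a virtual machine's datastore footprint using the formula above and tests whether a partition offset falls on a given boundary. Two details are assumptions beyond the text: the swap file is modeled as configured memory minus any memory reservation (VMware sizes the swap file this way; with no reservation it equals configured memory, as in the example), and the 64 KB boundary is a hypothetical value for illustration, not a documented VNX/VNXe setting.

```python
SECTOR_BYTES = 512


def vm_footprint_gb(virtual_disk_gb: float, configured_memory_gb: float,
                    reserved_memory_gb: float = 0.0, log_files_mb: float = 100.0) -> float:
    """Datastore space for one VM: virtual disks + swap file + log files.

    The swap file equals configured memory minus any memory reservation.
    100 MB is treated as 0.1 GB to match the worked example in the text.
    """
    swap_gb = max(configured_memory_gb - reserved_memory_gb, 0.0)
    return virtual_disk_gb + swap_gb + log_files_mb / 1000.0


def is_aligned(partition_offset_bytes: int, boundary_bytes: int) -> bool:
    """True if a partition starts on a multiple of the given boundary."""
    if boundary_bytes <= 0:
        raise ValueError("boundary_bytes must be positive")
    return partition_offset_bytes % boundary_bytes == 0


if __name__ == "__main__":
    # Worked example from the text: 20 GB disk, 16 GB memory, no reservation.
    print(round(vm_footprint_gb(20, 16), 1))  # 36.1

    boundary = 64 * 1024  # Hypothetical boundary used only for illustration.
    print(is_aligned(1024 * 1024, boundary))        # True  (Windows Server 2008 default, 1024 KB)
    print(is_aligned(63 * SECTOR_BYTES, boundary))  # False (legacy 63-sector offset)
```

The 63-sector (32,256-byte) offset used by older Windows releases such as Windows Server 2003 is not a multiple of 4 KB or 64 KB, which is why those partitions were misaligned by default.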

VSPEX VMware Memory Virtualization

VMware vSphere 5.0 has a number of advanced features that help to maximize performance and overall resource utilization. This section describes the performance benefits of some of these features for the VSPEX deployment.

Memory Compression

Memory overcommitment occurs when more memory is allocated to virtual machines than is physically present in a VMware ESXi host. Using sophisticated techniques such as ballooning and transparent page sharing, ESXi can handle memory overcommitment with little or no performance degradation. However, if the virtual machines actively use more memory than is present on the server, ESXi might resort to swapping out portions of a virtual machine's memory. Memory compression reduces the cost of this situation: before swapping pages to disk, ESXi first attempts to compress them and keep them in a compression cache in main memory.

For more details about VMware vSphere memory management concepts, see the VMware vSphere Resource Management Guide at: http://www.VMware.com/files/pdf/mem_mgmt_perf_Vsphere5.pdf

Virtual Networking

The Cisco Nexus 1000v collapses virtual and physical networking into a single infrastructure. The Nexus 1000v allows data center administrators to provision, configure, manage, monitor, and diagnose virtual machine network traffic and bare metal network traffic within a unified infrastructure.

The Nexus 1000v software extends Cisco data-center networking technology to the virtual machine with the following capabilities:

•

Each virtual machine includes a dedicated interface on the virtual Distributed Switch (vDS).

•

All virtual machine traffic is sent directly to the dedicated interface on the vDS.

•

The native VMware virtual switch in the hypervisor is replaced by the vDS.

•

Live migration and vMotion are also supported with the Cisco Nexus 1000v.

Benefits

•

Simplified operations—Seamless virtual networking infrastructure with a CLI similar to that of Cisco Nexus 5000 and 7000 Series switches

•

Improved network security—Contains VLAN proliferation

•

Optimized network utilization—Reduces broadcast domains

•

Reduced network complexity—Separates the network and server administrators' domains by providing port profiles by name

Virtual Networking Best Practices

Following are the VMware vSphere networking best practices:

•

Separate virtual machine and infrastructure traffic—Keep virtual machine and VMkernel or service console traffic separate. This can be accomplished physically using separate virtual switches that uplink to separate physical NICs, or virtually using VLAN segmentation.

•

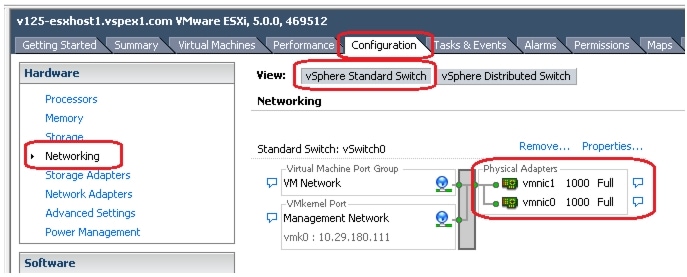

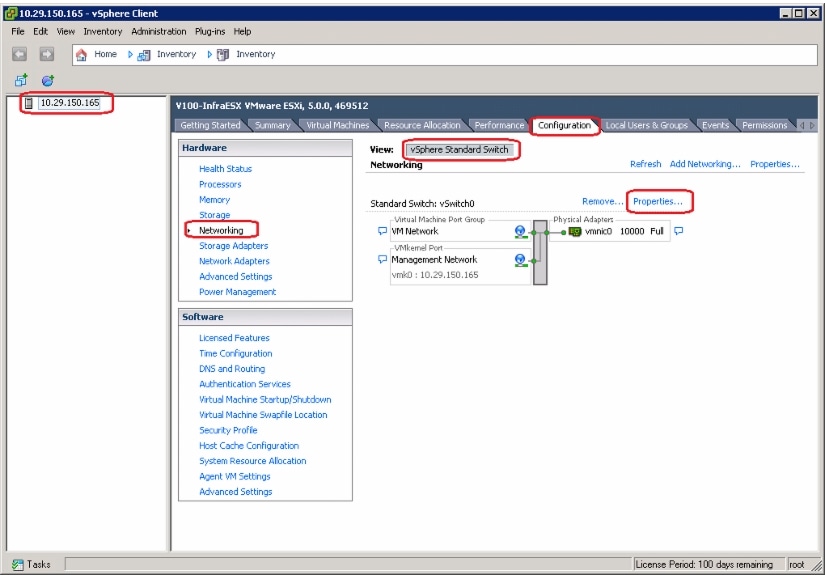

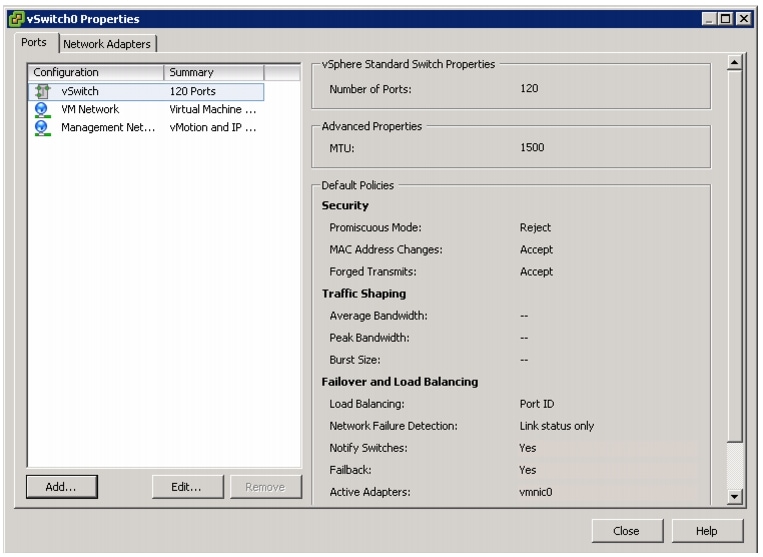

Use NIC Teaming—Use two physical NICs per vSwitch, and if possible, uplink the physical NICs to separate physical switches. Teaming provides redundancy against NIC failure and, if connected to separate physical switches, against switch failures. NIC teaming does not necessarily provide higher throughput.

•

Enable PortFast on ESXi host uplinks—Failover events can cause spanning tree protocol (STP) recalculations that can set switch ports into a forwarding or blocked state to prevent a network loop. This process can cause temporary network disconnects. To prevent this situation, set the switch ports connected to ESXi hosts to PortFast, which immediately sets the port back to the forwarding state and prevents link state changes on ESXi hosts from affecting the STP topology. Loops are not possible in virtual switches.

•

MAC pinning—MAC pinning-based load balancing and high availability is recommended over virtual port channel (vPC)-based load balancing because the MAC pinning approach is simpler. MAC pinning allocates virtual machine vNICs to the physical uplinks more statically; however, with 25 virtual machines per server, the network load is distributed fairly across the uplinks.

•

Converged Network and Storage I/O with 10Gbps Ethernet—Consolidating storage and network traffic can provide simplified cabling and management over maintaining separate switching infrastructures.
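The MAC pinning trade-off described above can be illustrated with a simplified model. This Python sketch assumes that each vNIC is pinned to one uplink in round-robin order and that, on an uplink failure, only the affected vNICs are re-pinned to the surviving uplinks. It is a model of the idea, not the Nexus 1000v pinning algorithm, and the uplink and vNIC names are hypothetical.

```python
from collections import Counter
from itertools import cycle


def pin_vnics(vnics: list[str], uplinks: list[str]) -> dict[str, str]:
    """Pin each vNIC to one uplink in round-robin order (simplified MAC pinning model)."""
    if not uplinks:
        raise ValueError("at least one uplink is required")
    return dict(zip(vnics, cycle(uplinks)))


def repin_after_failure(pinning: dict[str, str], failed: str, uplinks: list[str]) -> dict[str, str]:
    """Move only the vNICs pinned to a failed uplink onto the surviving uplinks."""
    survivors = [u for u in uplinks if u != failed]
    moved = [vnic for vnic, uplink in pinning.items() if uplink == failed]
    updated = dict(pinning)
    updated.update(pin_vnics(moved, survivors))
    return updated


if __name__ == "__main__":
    uplinks = ["uplink-1", "uplink-2"]                   # Hypothetical uplink names.
    vnics = [f"vm{i:02d}-vnic0" for i in range(1, 26)]  # 25 VMs, one vNIC each.
    pinning = pin_vnics(vnics, uplinks)
    print(Counter(pinning.values()))  # Counter({'uplink-1': 13, 'uplink-2': 12})
    after = repin_after_failure(pinning, "uplink-1", uplinks)
    print(Counter(after.values()))    # Counter({'uplink-2': 25})
```

With 25 virtual machines the split is 13/12, which is why static pinning still spreads load reasonably well; a single very busy VM, however, is always limited to one uplink.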

VMware vSphere Performance

With every release of VMware vSphere, new performance features reduce the overhead of running an application on the virtualized platform. Typical virtualization overhead for applications is less than 10%. Many of these features not only improve the performance of the virtualized application itself but also allow higher consolidation ratios. Understanding these features and taking advantage of them in your environment improves the likelihood of a successful virtualized deployment. Table 6 provides details on VMware vSphere performance features.

Table 6 VMware vSphere Performance

NUMA Support

ESXi uses a NUMA load balancer to assign a home node to a virtual machine. Because memory for the virtual machine is allocated from the home node, memory access is local and provides the best performance possible. Even applications that do not directly support NUMA benefit from this feature.

See The CPU Scheduler in VMware ESXi 5: http://www.vmware.com/pdf/Perf_Best_Practices_vSphere5.0.pdf

Transparent page sharing

Virtual machines running similar operating systems and applications typically have identical sets of memory content. Page sharing allows the hypervisor to reclaim the redundant copies and keep only one copy, which reduces total host memory consumption. If most of your application virtual machines run the same operating system and application binaries, total memory usage can be reduced, increasing consolidation ratios.

See Understanding Memory Resource Management in VMware ESXi 5.0: http://www.vmware.com/files/pdf/perf-vsphere-memory_management.pdf

Memory ballooning

By using a balloon driver loaded in the guest operating system, the hypervisor can reclaim host physical memory if memory resources are under contention. This is done with little to no impact to the performance of the application.

See Understanding Memory Resource Management in VMware ESXi 5.0: http://www.vmware.com/files/pdf/perf-vsphere-memory_management.pdf

Memory compression

When memory overcommitment would otherwise force a virtual machine into host swapping, ESXi first attempts to compress the pages selected for swapping. If a page can be compressed and stored in the compression cache, which is located in main memory, the next access to that page requires only a decompression instead of a swap-in from disk, which can be an order of magnitude faster.

See Understanding Memory Resource Management in VMware ESXi 5.0: http://www.vmware.com/files/pdf/perf-vsphere-memory_management.pdf

Large memory page support

An application that can benefit from large pages on native systems, such as Microsoft SQL Server, can potentially achieve a similar performance improvement on a virtual machine backed with large memory pages. Enabling large pages increases the memory page size from 4 KB to 2 MB.

See Performance Best Practices for VMware vSphere 5.0: http://www.vmware.com/pdf/Perf_Best_Practices_vSphere5.0.pdf

See also Performance and Scalability of Microsoft SQL Server on VMware vSphere 4:

http://www.vmware.com/files/pdf/perf_vsphere_sql_scalability.pdf
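The page sharing and memory compression rows of Table 6 can be illustrated with a small model. This Python sketch is not the ESXi implementation: SHA-256 and zlib stand in for whatever hashing and compression ESXi actually uses, and the 2 KB keep-or-swap threshold is an assumption modeled on the compression cache behavior described in the VMware memory management white paper cited above. The key idea it shows is that a hash only nominates candidate pages; a full comparison confirms a match before two pages are shared.

```python
import hashlib
import os
import zlib

PAGE_SIZE = 4096                   # Small (4 KB) x86 page.
COMPRESSED_LIMIT = PAGE_SIZE // 2  # Assumed threshold: keep a page only if it shrinks to 2 KB or less.


def share_pages(pages: list[bytes]) -> tuple[list[int], int]:
    """Content-based page sharing sketch.

    Returns, for each guest page, the index of the backing page holding its
    content, plus the number of distinct backing pages. A hash finds candidate
    matches; a full byte comparison confirms them before any page is shared.
    """
    backing: list[bytes] = []
    candidates: dict[bytes, list[int]] = {}
    mapping: list[int] = []
    for page in pages:
        digest = hashlib.sha256(page).digest()
        match = next((i for i in candidates.get(digest, []) if backing[i] == page), None)
        if match is None:
            backing.append(page)
            match = len(backing) - 1
            candidates.setdefault(digest, []).append(match)
        mapping.append(match)
    return mapping, len(backing)


def compress_or_swap(page: bytes) -> str:
    """Decide whether a page chosen for reclamation goes to the compression cache or to swap."""
    return "compress" if len(zlib.compress(page)) <= COMPRESSED_LIMIT else "swap"


if __name__ == "__main__":
    zero_page = bytes(PAGE_SIZE)
    text_page = (b"VSPEX " * 700)[:PAGE_SIZE]
    mapping, distinct = share_pages([zero_page, text_page, zero_page, zero_page])
    print(mapping, distinct)                        # [0, 1, 0, 0] 2
    print(compress_or_swap(zero_page))              # compress
    print(compress_or_swap(os.urandom(PAGE_SIZE)))  # swap (random data does not compress)
```

Four guest pages are backed by two physical pages here; in a host running many copies of the same guest OS, the same effect frees memory for additional virtual machines.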

Physical and Virtual CPUs

VMware uses the terms virtual CPU (vCPU) and physical CPU to distinguish between the processors within the virtual machine and the underlying physical x86/x64-based processor cores. Virtual machines with more than one virtual CPU are also called SMP (symmetric multiprocessing) virtual machines. The virtual machine monitor (VMM), or hypervisor, is responsible for CPU virtualization. When a virtual machine starts running, control transfers to the VMM, which virtualizes the guest OS instructions.

Virtual SMP

VMware Virtual Symmetric Multiprocessing (Virtual SMP) enhances virtual machine performance by enabling a single virtual machine to use multiple physical processor cores simultaneously. VMware vSphere 5.0 supports up to 32 virtual CPUs per virtual machine. The biggest advantage of an SMP system is the ability to use multiple processors to execute multiple tasks concurrently, thereby increasing throughput (for example, the number of transactions per second). Only workloads that support parallelization (including multiple processes or multiple threads that can run in parallel) can benefit from SMP.

The virtual processors from SMP-enabled virtual machines are co-scheduled. That is, if physical processor cores are available, the virtual processors are mapped one-to-one onto physical processors and are then run simultaneously. In other words, if one vCPU in the virtual machine is running, a second vCPU is co-scheduled so that they execute nearly synchronously. Consider the following points when using multiple vCPUs:

•

In simplified terms, if enough idle physical CPUs are not available when the virtual machine wants to run, the virtual machine remains in a special wait state. The time a virtual machine spends in this wait state is called ready time.

•

Even idle processors perform a limited amount of work in an operating system. In addition to this minimal amount, the ESXi host manages these "idle" processors, resulting in some additional work by the hypervisor. These low-utilization vCPUs compete with other vCPUs for system resources.
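Ready time is usually read from vCenter performance charts, which report CPU Ready as a summation in milliseconds per sample interval (20 seconds for real-time charts, 5 minutes for the past-day view). The following Python sketch converts that summation into a percentage averaged per vCPU. The sample values are hypothetical, and the sketch deliberately does not encode any alerting threshold; use the counters and guidance in Table 7 for that.

```python
def cpu_ready_percent(ready_ms: float, interval_s: float = 20.0, vcpus: int = 1) -> float:
    """Convert a CPU Ready summation (ms) for one sample interval into an average percent per vCPU.

    interval_s defaults to 20 seconds, the sampling interval of vCenter real-time charts.
    """
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    if vcpus < 1:
        raise ValueError("vcpus must be at least 1")
    return ready_ms * 100.0 / (interval_s * 1000.0 * vcpus)


if __name__ == "__main__":
    # Hypothetical sample: 1,000 ms of ready time in one 20-second real-time sample.
    print(cpu_ready_percent(1000))           # 5.0
    print(cpu_ready_percent(1000, vcpus=2))  # 2.5
    # The same 1,000 ms in a 300-second (past day) rollup sample is much less significant.
    print(round(cpu_ready_percent(1000, interval_s=300), 3))  # 0.333
```

Normalizing by both the interval and the vCPU count matters: the same raw millisecond value can indicate contention on a 1-vCPU VM in a real-time chart and be negligible in a rolled-up chart for a larger VM.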

In VMware ESXi 5, the CPU scheduler underwent several improvements to provide better performance and scalability; for more information, see the CPU Scheduler in VMware ESXi 5: http://www.vmware.com/pdf/Perf_Best_Practices_vSphere5.0.pdf. For example, the relaxed co-scheduling algorithm was refined so that scheduling constraints due to co-scheduling requirements are further reduced. These improvements resulted in better linear scalability and performance of SMP virtual machines.

Overcommitment

VMware conducted tests on virtual CPU overcommitment with SAP and SQL workloads, showing that performance inside the virtual machines degrades linearly, in proportion to the level of overcommitment. Because the performance degradation is "graceful," any virtual CPU overcommitment can be effectively managed by using VMware DRS and VMware vSphere® vMotion® to move virtual machines to other ESXi hosts to obtain more processing power. By implementing CPU overcommitment intelligently, the consolidation ratios of Web front-end and application servers can be driven higher while maintaining acceptable performance. If a virtual machine should not participate in overcommitment, setting a CPU reservation provides a guaranteed CPU allocation for that virtual machine. This practice is generally not recommended, because the reserved resources are not available to other virtual machines and flexibility is often required to manage changing workloads. However, SLAs and multi-tenancy may require a guaranteed amount of compute resources to be available. In these cases, reservations make sure that these requirements are met.

When choosing to overcommit CPU resources, monitor vSphere and the applications to be sure responsiveness is maintained at an acceptable level. Table 7 lists counters that can be monitored to help drive higher consolidation while maintaining system performance.

Hyper-Threading

Hyper-threading technology (Intel's implementation of simultaneous multithreading, or SMT) enables a single physical processor core to behave like two logical processors, essentially allowing two independent threads to run simultaneously. Unlike doubling the number of processor cores, which can roughly double performance, hyper-threading can provide anywhere from a slight to a significant increase in system performance by keeping the processor pipeline busier.

Non-Uniform Memory Access (NUMA)

Non-Uniform Memory Access (NUMA) compatible systems contain multiple nodes, each consisting of a set of processors and memory. Access to memory in the same node is local, while access to memory in another node is remote. Remote access can take longer because it involves a multihop operation. NUMA-aware applications attempt to keep threads local to the memory they use in order to improve performance.

VMware ESXi provides load balancing on NUMA systems. For the best performance, enable NUMA on compatible systems. On a NUMA-enabled ESXi host, virtual machines are assigned a home node from which the virtual machine's memory is allocated. Because it is rare for a virtual machine to migrate away from its home node, memory access is mostly kept local.

For applications that scale out well, it is beneficial to size the virtual machines with the NUMA node size in mind. For example, in a system with two hexa-core processors and 64 GB of memory, sizing a virtual machine at six virtual CPUs and 32 GB or less means that the virtual machine does not have to span multiple nodes.
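The NUMA sizing rule in the example above can be expressed as a simple check. This Python sketch assumes one NUMA node per socket, memory populated evenly across sockets, and that only physical cores (not hyper-threads) count toward the vCPU limit; the host values reproduce the two hexa-core, 64 GB example from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NumaHost:
    sockets: int           # Assumes one NUMA node per socket.
    cores_per_socket: int  # Physical cores only; hyper-threads are not counted.
    memory_gb: float       # Assumes memory is populated evenly across sockets.

    @property
    def node_memory_gb(self) -> float:
        return self.memory_gb / self.sockets


def fits_in_numa_node(host: NumaHost, vcpus: int, memory_gb: float) -> bool:
    """True if a VM's vCPUs and memory both fit within a single NUMA node."""
    return vcpus <= host.cores_per_socket and memory_gb <= host.node_memory_gb


if __name__ == "__main__":
    host = NumaHost(sockets=2, cores_per_socket=6, memory_gb=64)  # Example from the text.
    print(fits_in_numa_node(host, vcpus=6, memory_gb=32))  # True
    print(fits_in_numa_node(host, vcpus=8, memory_gb=32))  # False: needs cores from both nodes
    print(fits_in_numa_node(host, vcpus=4, memory_gb=48))  # False: needs memory from both nodes
```

Both conditions must hold: a VM that fits on one node's cores but needs more than one node's memory still incurs remote memory access.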

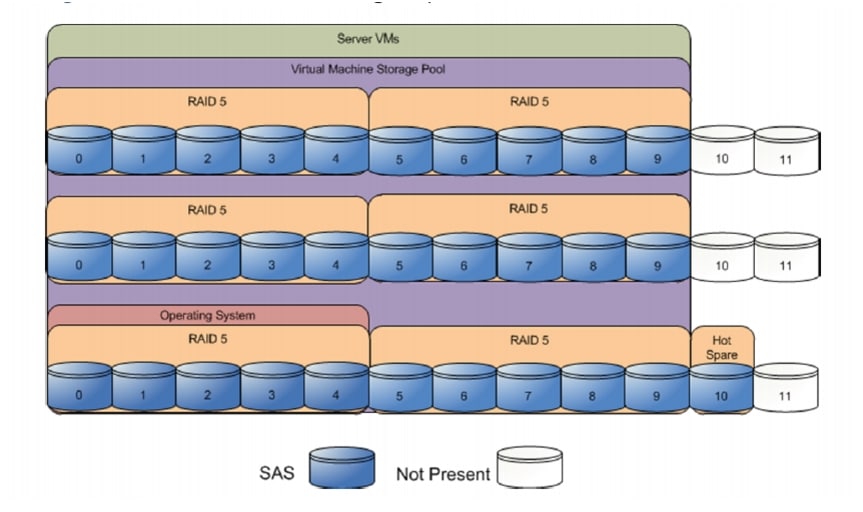

VSPEX VMware Storage Virtualization

Disk provisioning on the EMC VNXe series is simplified through the use of wizards, so that administrators need not choose the disks that belong to a given storage pool. The wizard automatically chooses available disks, regardless of where they physically reside in the array. Disk provisioning on the EMC VNX series, on the other hand, requires administrators to choose the disks for each storage pool.

Storage Layout

This section illustrates the physical disk layouts on the EMC VNXe and VNX storage arrays.

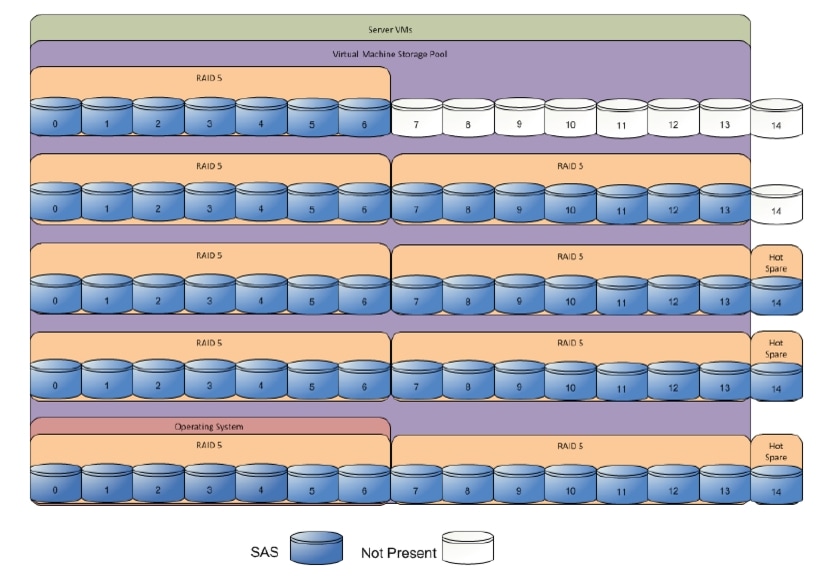

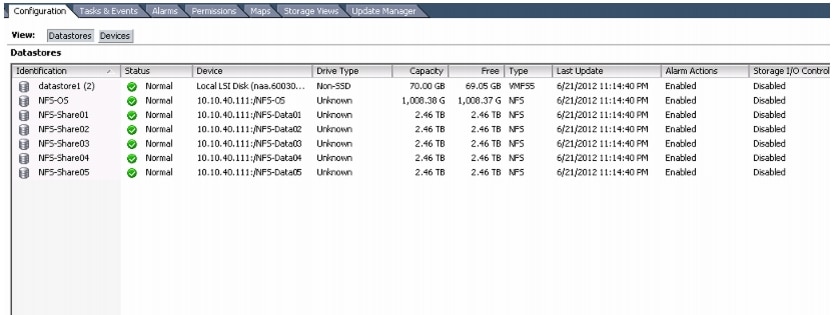

Figure 11 shows the storage architecture for 50 virtual machines on the VNXe3150:

Figure 11 Storage Architecture for 50 Virtual Machines on EMC VNXe3150

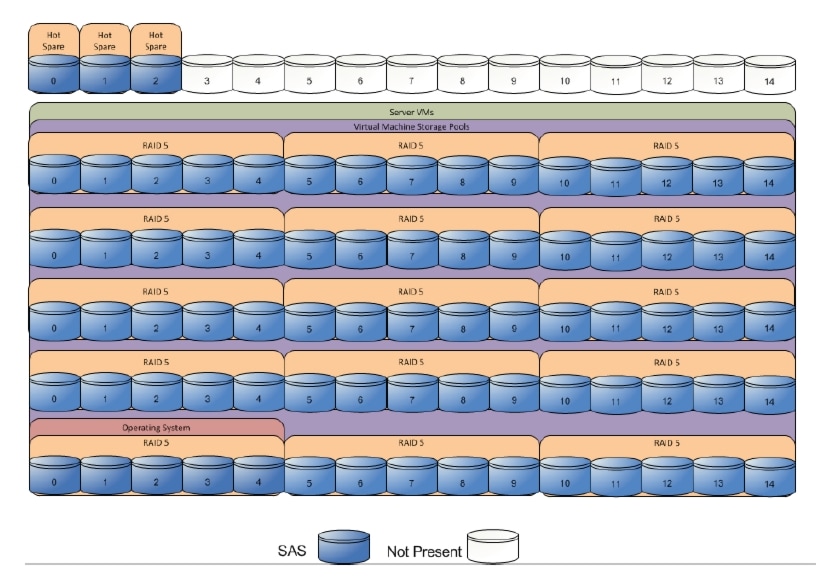

Figure 12 shows the storage architecture for 100 virtual machines on the VNXe3300:

Figure 12 Storage Architecture for 100 Virtual Machines on EMC VNXe3300

Figure 13 shows the storage architecture for 125 virtual machines on the VNX5300:

Figure 13 Storage Architecture for 125 Virtual Machines on EMC VNX5300

Table 8 provides the datastore sizes for the architectures shown in Figure 11, Figure 12, and Figure 13:

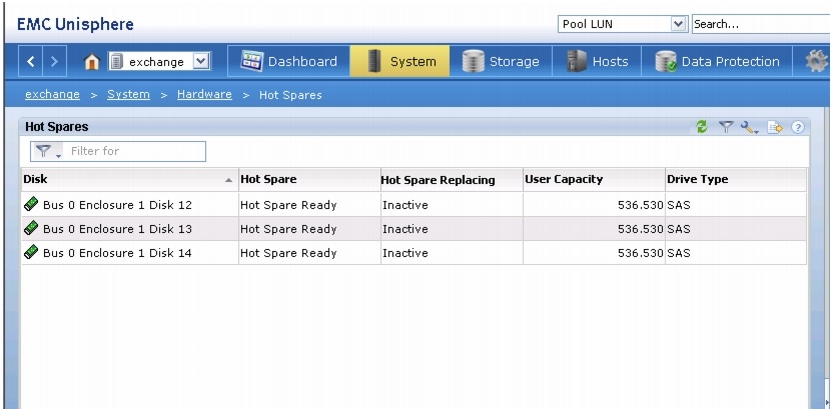

For all the architectures, EMC recommends one hot spare disk for every 30 disks of a given type.
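The hot spare rule can be applied per disk type with a one-line calculation. This Python sketch assumes the count is rounded up, so any partial group of 30 disks still receives a spare; the disk counts in the usage example are hypothetical, and the actual layouts are those in Table 8 and Figures 11 through 13.

```python
def hot_spares_needed(disk_count: int, disks_per_spare: int = 30) -> int:
    """Hot spares for one disk type: one per 30 disks, rounding any partial group up."""
    if disk_count < 0 or disks_per_spare < 1:
        raise ValueError("disk_count must be >= 0 and disks_per_spare >= 1")
    return -(-disk_count // disks_per_spare)  # Integer ceiling division.


if __name__ == "__main__":
    # Hypothetical disk counts per type, for illustration only.
    layout = {"SAS": 45, "NL-SAS": 12}
    print({disk_type: hot_spares_needed(n) for disk_type, n in layout.items()})
    # {'SAS': 2, 'NL-SAS': 1}
```

Spares are counted separately for each disk type because a spare can only replace a failed disk of a compatible type.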

The VNX/VNXe family is designed for five 9s availability by using redundant components throughout the array. All of the array components are capable of continued operation in case of hardware failure. The RAID disk configuration on the array provides protection against data loss due to individual disk failures, and the available hot spare drives can be dynamically allocated to replace a failing disk.
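"Five 9s" means 99.999% availability. This Python sketch converts an availability target into the maximum downtime it allows per year, assuming a 365-day year; it is plain arithmetic, not a statement about measured VNX/VNXe uptime.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600; ignores leap years.


def annual_downtime_minutes(availability_percent: float) -> float:
    """Maximum yearly downtime permitted by an availability target."""
    if not 0.0 <= availability_percent <= 100.0:
        raise ValueError("availability_percent must be between 0 and 100")
    return (100.0 - availability_percent) / 100.0 * MINUTES_PER_YEAR


if __name__ == "__main__":
    for nines, target in [(3, 99.9), (4, 99.99), (5, 99.999)]:
        print(f"{nines} nines ({target}%): {annual_downtime_minutes(target):.2f} minutes/year")
```

Five nines works out to roughly 5.26 minutes of downtime per year, which is why redundant components and hot spares are needed rather than relying on manual repair.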

Storage Virtualization

NFS is a distributed file system protocol that gives multiple hosts shared access to storage over the network. VMware vSphere 5.0 accesses NFS datastores using NFS version 3 over TCP. Each virtual machine is encapsulated in a small set of files, and NFS datastore mount points are used for the operating system and data partitions.

It is preferable to deploy virtual machine files on shared storage to take advantage of VMware vMotion, VMware High Availability™ (HA), and VMware Distributed Resource Scheduler™ (DRS). This is considered a best practice for mission-critical deployments, which are often installed on third-party, shared storage management solutions.

Architecture for 50 VMware Virtual Machines

Figure 14 shows the logical layout of 50 VMware virtual machines. Following are the key aspects of this solution:

•

Three Cisco C220 M3 servers are used.

•

The solution uses Cisco Nexus 3048 switches and Broadcom 1 Gbps NICs. This results in a 1 Gbps solution for storage access.

•

Virtual port-channels on storage side networking provide high-availability and load balancing.

•

The Cisco Nexus 1000v distributed virtual switch provides a port-profile-based virtual networking solution.

•

On the server side, the port-profile-based MAC pinning feature provides simplified load balancing and network high availability.

•

EMC VNXe3150 is used as a storage array.

Figure 14 Cisco Solution VMware Architecture for 50 Virtual Machines

Architecture for 100 VMware Virtual Machines

Figure 15 shows the logical layout of 100 VMware virtual machines. Following are the key aspects of this solution:

•

Four Cisco C220 M3 servers are used.

•

The solution uses Cisco Nexus 5548UP switches and 10 Gbps Cisco VIC adapters. This results in a 10 Gbps solution for storage access and networking, and makes vMotion 9 times faster than in the 1 Gbps solution.

•

Virtual port-channels on storage side networking provide high-availability and load balancing.

•

The Cisco Nexus 1000v distributed virtual switch provides a port-profile-based virtual networking solution.

•

On the server side, the port-profile-based MAC pinning feature provides simplified load balancing and network high availability.

•

EMC VNXe3300 is used as a storage array.

Figure 15 Cisco Solution VMware Architecture for 100 Virtual Machines

Architecture for 125 VMware Virtual Machines

Figure 16 shows the logical layout of 125 VMware virtual machines. Following are the key aspects of this solution:

•

Five Cisco C220 M3 servers are used.

•

The solution uses Cisco Nexus 5548UP switches and 10 Gbps Cisco VIC adapters. This results in a 10 Gbps solution for storage access and networking, and makes vMotion 9 times faster than in the 1 Gbps solution.

•

Virtual port-channels on storage side networking provide high-availability and load balancing.

•

The Cisco Nexus 1000v distributed virtual switch provides a port-profile-based virtual networking solution.

•

On the server side, the port-profile-based MAC pinning feature provides simplified load balancing and network high availability.

•

EMC VNX5300 is used as a storage array.

Figure 16 Cisco Solution VMware Architecture for 125 Virtual Machines

Sizing Guidelines

In any discussion about virtual infrastructures, it is important to first define a reference workload. Not all servers perform the same tasks, and it is impractical to build a reference that takes into account every possible combination of workload characteristics.

Defining the Reference Workload

To simplify the discussion, we have defined a representative customer reference workload. By comparing your actual customer usage to this reference workload, you can extrapolate which reference architecture to choose.

For the VSPEX solutions, the reference workload was defined as a single virtual machine. This virtual machine has the following characteristics:

•

1 virtual CPU

•

2 GB of memory

•

100 GB of storage capacity

•

25 IOPS

This specification for a virtual machine is not intended to represent any specific application. Rather, it represents a single common point of reference to measure other virtual machines.

Applying the Reference Workload

When considering an existing server that will move into a virtual infrastructure, you have the opportunity to gain efficiency by right-sizing the virtual hardware resources assigned to that system.

The reference architectures create a pool of resources sufficient to host a target number of reference virtual machines as described above. Customer virtual machines may not exactly match the specifications above. In that case, a single specific customer virtual machine can be treated as the equivalent of some number of reference virtual machines, and that many reference virtual machines are considered used in the pool. You can continue to provision virtual machines from the pool of resources until it is exhausted. Consider these examples:

Example 1 Custom Built Application

A small custom-built application server needs to move into this virtual infrastructure. The physical hardware supporting the application is not being fully utilized at present. A careful analysis of the existing application reveals that the application can use one processor and needs 3 GB of memory to run normally. The I/O workload ranges from 4 IOPS when idle to 15 IOPS when busy. The entire application uses only about 30 GB of local hard drive storage.

Based on these numbers, the following resources are needed from the resource pool:

•

CPU resources for 1 reference VM

•

Memory resources for 2 reference VMs

•

Storage capacity for 1 reference VM

•

IOPS for 1 reference VM

In this example, a single virtual machine uses the resources of two reference VMs. If the original pool had the capability to provide 100 VMs' worth of resources, the new capability is 98 VMs.

Example 2 Point of Sale System

The database server for a customer's point-of-sale system needs to move into this virtual infrastructure. It is currently running on a physical system with four CPUs and 16 GB of memory. It uses 200 GB of storage and generates 200 IOPS during an average busy cycle.

The following resources are needed from the resource pool to virtualize this application:

•

CPUs of 4 reference VMs

•

Memory of 8 reference VMs

•

Storage of 2 reference VMs

•

IOPS of 8 reference VMs

In this case, the one virtual machine uses the resources of eight reference virtual machines. If this was implemented on a resource pool for 50 virtual machines, 42 virtual machines of capability remain in the pool.

Example 3 Web Server

The customer's web server needs to move into this virtual infrastructure. It is currently running on a physical system with two CPUs and 8 GB of memory. It uses 25 GB of storage and generates 50 IOPS during an average busy cycle.

The following resources are needed from the resource pool to virtualize this application:

•

CPUs of 2 reference VMs

•

Memory of 4 reference VMs

•

Storage of 1 reference VM

•

IOPS of 2 reference VMs

In this case, the virtual machine uses the resources of four reference virtual machines. If this was implemented on a resource pool for 125 virtual machines, 121 virtual machines of capability remain in the pool.

Example 4 Decision Support Database

The database server for a customer's decision support system needs to move into this virtual infrastructure. It is currently running on a physical system with 10 CPUs and 48 GB of memory. It uses 5 TB of storage and generates 700 IOPS during an average busy cycle.

The following resources are needed from the resource pool to virtualize this application:

•

CPUs of 10 reference VMs

•

Memory of 24 reference VMs

•

Storage of 52 reference VMs

•

IOPS of 28 reference VMs

In this case, the one virtual machine uses the resources of 52 reference virtual machines. If this was implemented on a resource pool for 100 virtual machines, 48 virtual machines of capability remain in the pool.

Summary of Examples

The four examples show the flexibility of the resource pool model. In all four cases, the workloads simply reduce the amount of available resources in the pool. If all four examples were implemented on the same virtual infrastructure with an initial capacity of 100 virtual machines, they could all be implemented, using 66 reference virtual machines (2 + 8 + 4 + 52) and leaving the capacity of 34 reference virtual machines in the resource pool.
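The resource pool arithmetic in these examples can be automated. This Python sketch assumes a reference virtual machine of 1 vCPU, 2 GB of memory, 100 GB of storage, and 25 IOPS (the values implied by the per-resource counts in the examples) and treats 1 TB as 1,024 GB, which reproduces the 52 reference VMs of storage in Example 4. Each workload consumes as many reference VMs as its most demanding resource requires, rounded up; IOPS are sized at the busy-period value.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    vcpus: int
    memory_gb: float
    storage_gb: float
    iops: float


# Reference VM inferred from the worked examples (assumption): 1 vCPU, 2 GB, 100 GB, 25 IOPS.
REFERENCE = Workload("reference", vcpus=1, memory_gb=2, storage_gb=100, iops=25)


def reference_equivalents(w: Workload, ref: Workload = REFERENCE) -> int:
    """Reference VMs consumed: the most demanding resource, rounded up, sets the count."""
    return max(
        math.ceil(w.vcpus / ref.vcpus),
        math.ceil(w.memory_gb / ref.memory_gb),
        math.ceil(w.storage_gb / ref.storage_gb),
        math.ceil(w.iops / ref.iops),
    )


def remaining_capacity(pool_size: int, workloads: list[Workload]) -> int:
    """Reference VMs left in a pool after placing the given workloads."""
    used = sum(reference_equivalents(w) for w in workloads)
    if used > pool_size:
        raise ValueError(f"workloads need {used} reference VMs; pool has {pool_size}")
    return pool_size - used


if __name__ == "__main__":
    examples = [
        Workload("custom-app", vcpus=1, memory_gb=3, storage_gb=30, iops=15),
        Workload("pos-db", vcpus=4, memory_gb=16, storage_gb=200, iops=200),
        Workload("web", vcpus=2, memory_gb=8, storage_gb=25, iops=50),
        Workload("dss-db", vcpus=10, memory_gb=48, storage_gb=5 * 1024, iops=700),
    ]
    for w in examples:
        print(w.name, reference_equivalents(w))  # 2, 8, 4, 52 respectively
    print(remaining_capacity(100, examples))     # 34
```

Taking the maximum rather than the sum reflects the pool model: a workload that is heavy on one resource (storage in Example 4) consumes that many reference VMs even if its other demands are small.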

In more advanced cases, there may be tradeoffs between memory and I/O, or other relationships where increasing the amount of one resource decreases the need for another. In these cases, the interactions between resource allocations become highly complex and are out of the scope of this document. However, when a change in the resource balance is observed and the new level of requirements is known, these virtual machines can be added to the infrastructure using the method described in the above examples.

VSPEX Configuration Guidelines

This section provides the procedure to deploy the Cisco solution for EMC VSPEX VMware architecture.

Follow these steps to configure the Cisco solution for EMC VSPEX VMware architectures:

1.

Pre-deployment tasks.

2.

Prepare servers.

3.

Prepare switches, connect network and configure switches.

4.

Prepare and configure storage array.

5.

Install ESXi servers and vCenter infrastructure.

6.

Install and configure SQL server database.

7.

Install and configure vCenter server.

8.

Install and configure Nexus 1000v.

9.

Test the installation.

These steps are described in detail in the following sections.

Pre-Deployment Tasks

Pre-deployment tasks include procedures that do not directly relate to environment installation and configuration, but whose results will be needed at the time of installation. Examples of pre-deployment tasks are collection of hostnames, IP addresses, VLAN IDs, license keys, installation media, and so on. These tasks should be performed before the customer visit to decrease the time required onsite.

•

Gather documents—Gather the related documents listed in the Preface. These are used throughout the text of this document to provide detail on setup procedures and deployment best practices for the various components of the solution.

•

Gather tools—Gather the required and optional tools for the deployment. Use the following table to confirm that all equipment, software, and appropriate licenses are available before the deployment process.

•

Gather data—Collect the customer-specific configuration data for networking, naming, and required accounts. Enter this information into the Customer Configuration Data worksheet for reference during the deployment process.

Customer Configuration Data

To reduce the onsite time, information such as IP addresses and hostnames should be assembled as part of the planning process.

The Customer Configuration Data Sheet section provides a tabulated record of relevant information (to be filled in at the customer site). This form can be expanded or contracted as required, and information may be added, modified, and recorded as the deployment progresses.

Additionally, complete the VNXe Series Configuration Worksheet, available on the EMC online support website, to provide the most comprehensive array-specific information.
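Because the goal of gathering configuration data in advance is to reduce onsite time, it can help to sanity-check the worksheet before the visit. This Python sketch validates hypothetical worksheet entries (hostname, IP address, subnet, VLAN ID) using only the standard library and flags duplicate IP addresses. The field layout and sample values are assumptions for illustration, not the format of the Customer Configuration Data Sheet.

```python
import ipaddress
import re
from collections import Counter

# One DNS label: 1-63 letters, digits, or hyphens, not starting or ending with a hyphen.
HOSTNAME_LABEL = re.compile(r"(?!-)[A-Za-z0-9-]{1,63}(?<!-)")


def validate_entry(hostname: str, ip: str, subnet: str, vlan_id: int) -> list[str]:
    """Return a list of problems found in one worksheet entry (an empty list means OK)."""
    errors = []
    labels = hostname.split(".")
    if len(hostname) > 253 or not all(HOSTNAME_LABEL.fullmatch(label) for label in labels):
        errors.append(f"invalid hostname: {hostname!r}")
    try:
        if ipaddress.ip_address(ip) not in ipaddress.ip_network(subnet):
            errors.append(f"{ip} is not in subnet {subnet}")
    except ValueError as exc:
        errors.append(f"bad address or subnet: {exc}")
    if not 1 <= vlan_id <= 4094:  # 802.1Q VLAN IDs 0 and 4095 are reserved.
        errors.append(f"VLAN ID out of range: {vlan_id}")
    return errors


def duplicate_ips(ips: list[str]) -> list[str]:
    """IP addresses that appear more than once in the worksheet."""
    return [ip for ip, count in Counter(ips).items() if count > 1]


if __name__ == "__main__":
    # Hypothetical worksheet rows: (hostname, IP, subnet, VLAN ID).
    rows = [
        ("esxi-01.example.local", "10.10.10.11", "10.10.10.0/24", 10),
        ("esxi-02.example.local", "10.10.10.11", "10.10.10.0/24", 10),
        ("-vcenter", "10.10.20.5", "10.10.10.0/24", 4095),
    ]
    for row in rows:
        print(row[0], validate_entry(*row) or "OK")
    print("duplicates:", duplicate_ips([row[1] for row in rows]))
```

Catching a duplicate address or an out-of-range VLAN ID at this stage is far cheaper than discovering it while configuring switches or ESXi hosts onsite.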

Preparing Servers

Preparing the Cisco C220 M3 servers is a common step for all the VMware architectures. First, install the C220 M3 server in a rack. For details about physically mounting the server, see the Cisco C220 installation guide: http://www.cisco.com/en/US/docs/unified_computing/ucs/c/hw/C220/install/install.html

To prepare the servers, follow these steps:

1.

Configure Management IP Address for CIMC Connectivity

2.

Enabling Virtualization Technology in BIOS

These steps are discussed in detail in the following sections.

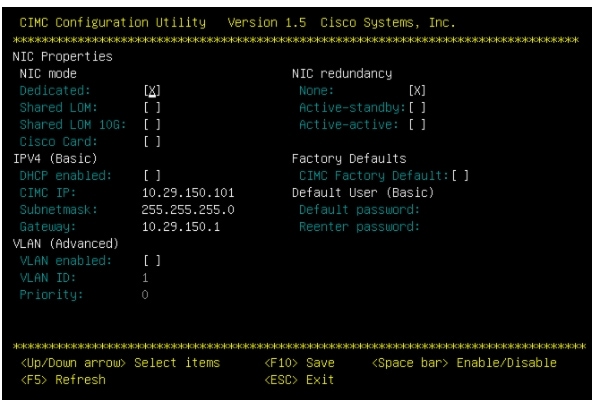

Configure Management IP Address for CIMC Connectivity

To power-on the server and configure the management IP address, follow these steps:

1.

Attach a supplied power cord to each power supply in your server, and then attach the power cord to a grounded AC power outlet.

2.

Connect a USB keyboard and VGA monitor by using the supplied KVM cable connected to the KVM connector on the front panel.

3.

Press the Power button to boot the server. Watch for the prompt to press F8.

4.

During bootup, press F8 when prompted to open the BIOS CIMC Configuration Utility.

5.

Set the NIC mode to Dedicated and NIC redundancy to None.

6.

Choose whether to enable DHCP for dynamic network settings, or to enter static network settings.

7.

Press F10 to save your settings and reboot the server.

Figure 17 Configuring CIMC IP in CIMC Configuration Utility

When the CIMC IP address is configured, the server can be managed using the HTTPS-based web GUI or the CLI.

Note

The default username for the server is "admin" and the default password is "password". Cisco strongly recommends changing the default password.

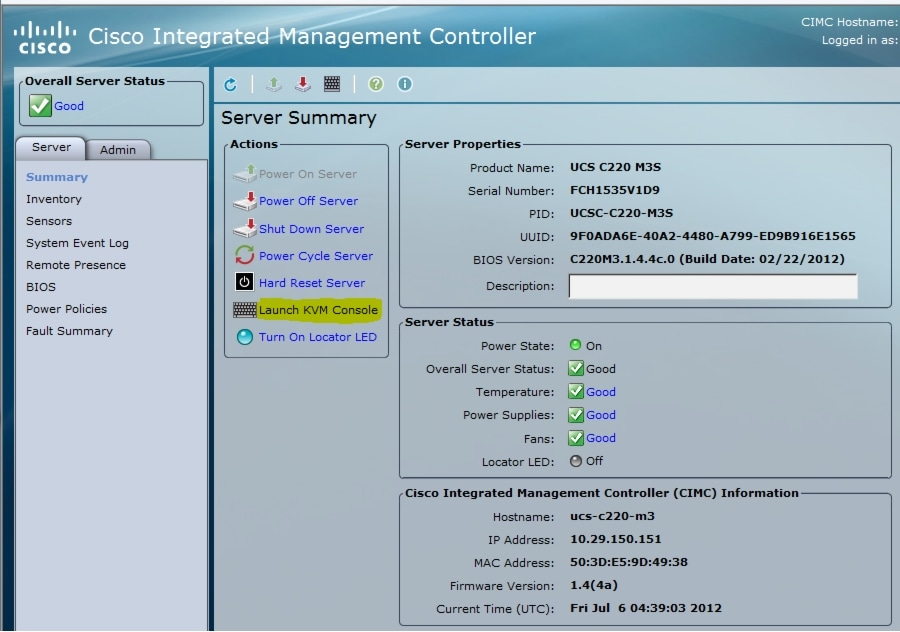

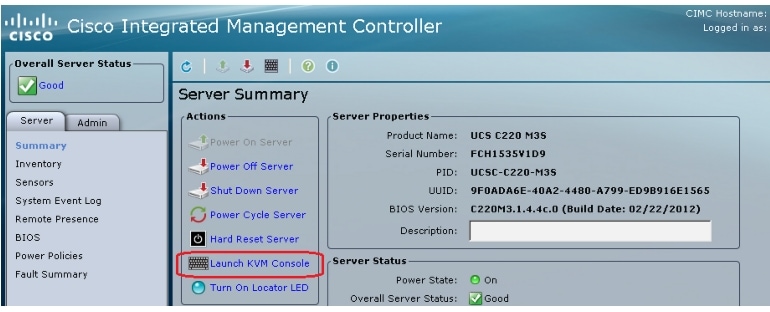

Enabling Virtualization Technology in BIOS

VMware ESXi requires an x64-based processor, hardware-assisted virtualization (Intel VT enabled), and hardware data execution protection (Execute Disable enabled). To enable Intel® VT and Execute Disable in the BIOS, follow these steps:

1.

Using a web browser, connect to the CIMC using the IP address configured in the section Configure Management IP Address for CIMC Connectivity.

2.

Launch the KVM from the CIMC GUI.

Figure 18 Launching KVM Console Through CIMC GUI

3.

Press the Power button to boot the server. Watch for the prompt to press F2.

4.

During bootup, press F2 when prompted to open the BIOS Setup Utility.

5.

Choose Advanced tab > Processor Configuration.

Figure 19 Enabling Virtualization Technology in KVM Console

6.

Enable Execute Disable and Intel VT as shown in Figure 19.

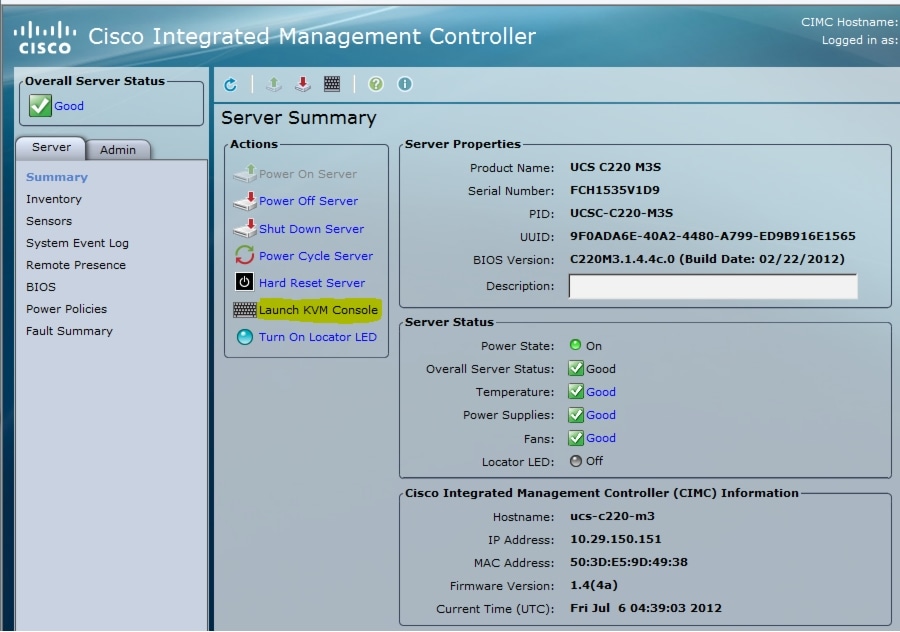

Configuring RAID

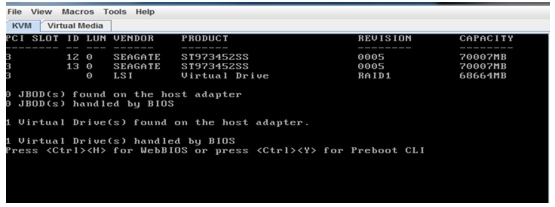

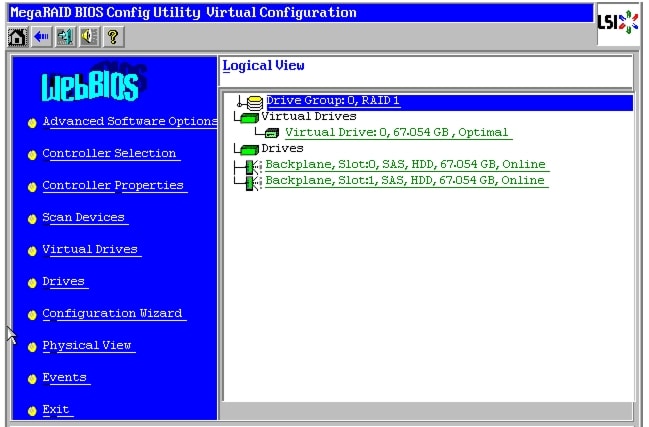

The RAID controller type is Cisco UCSC RAID SAS 2008, which supports RAID levels 0, 1, and 5. Configure RAID 1 for this setup and set the resulting virtual drive as the boot drive.

To configure the RAID controller, follow these steps:

1.

Using a web browser, connect to the CIMC using the IP address configured in the section Configure Management IP Address for CIMC Connectivity.

2.

Launch the KVM from the CIMC GUI.

Figure 20 Launching KVM Console Through CIMC GUI

3.

During bootup, press Ctrl+H when prompted to configure RAID in the WebBIOS utility.

Figure 21 Opening WebBIOS Window

4.

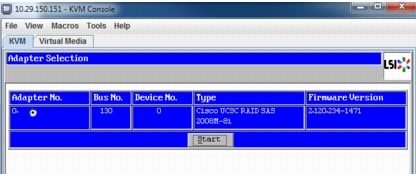

Choose the adapter and click the Start button.

Figure 22 Adapter Selection Window

5.

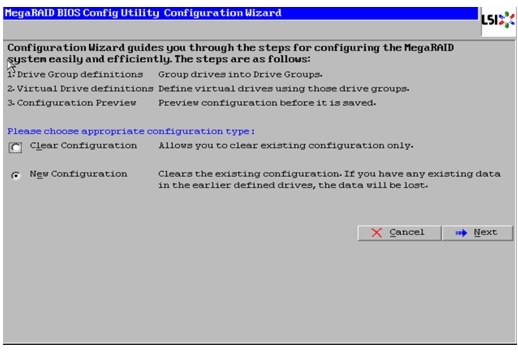

Choose New Configuration and click Next.

Figure 23 MegaRAID Configuration Wizard

6.

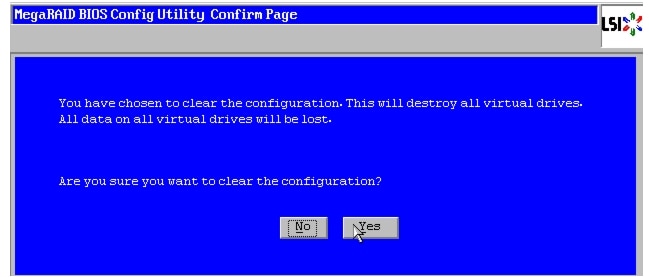

Choose Yes and click Next to clear the configuration.

Figure 24 MegaRAID Confirmation Window

7.

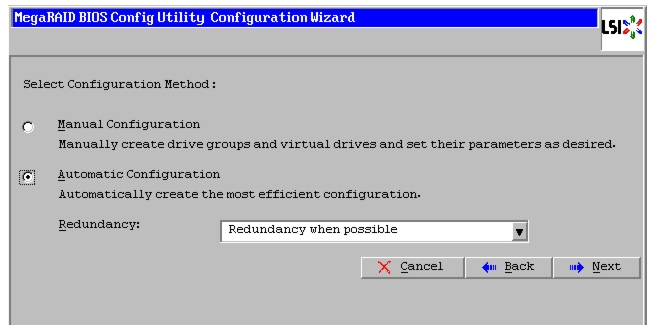

Click the "Automatic Configuration" radio button and choose "Redundancy when possible" from the "Redundancy" drop-down list. If only two disks are available, WebBIOS creates a RAID 1 configuration.

Figure 25 Selecting Configuration

8.

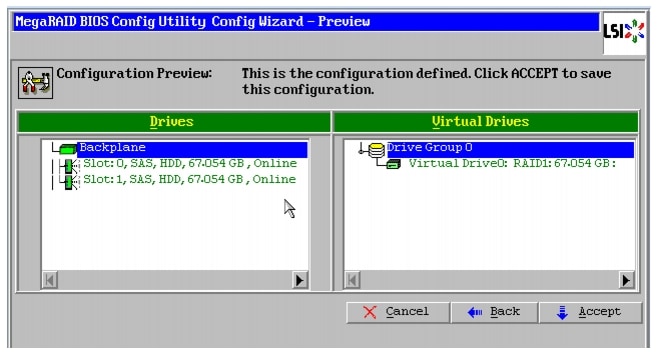

Click Accept when you are prompted to save the configuration.

Figure 26 MegaRAID Configuration Preview

9.

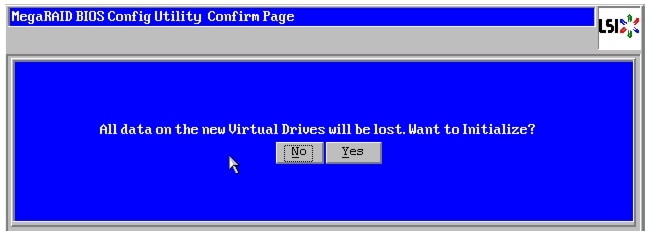

Click Yes when prompted to initialize the new virtual drives.

Figure 27 Initializing New Virtual Drives

10.

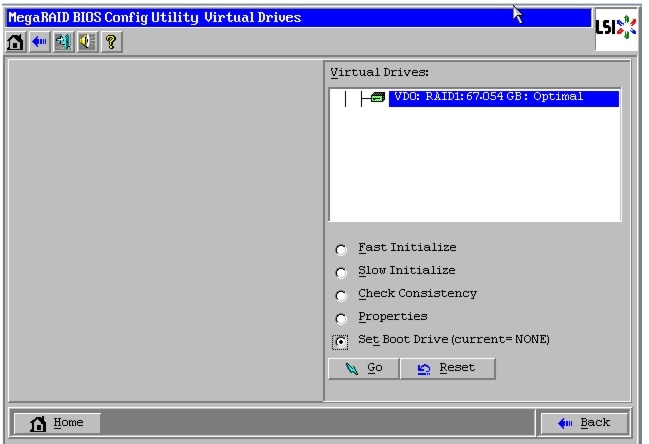

Select Set Boot Drive for the virtual drive created above and click Go.

Figure 28 Setting Virtual Drive as Boot Drive

11.

Click Exit and reboot the system.

Figure 29 Logical View of Virtual Configuration in WebBIOS

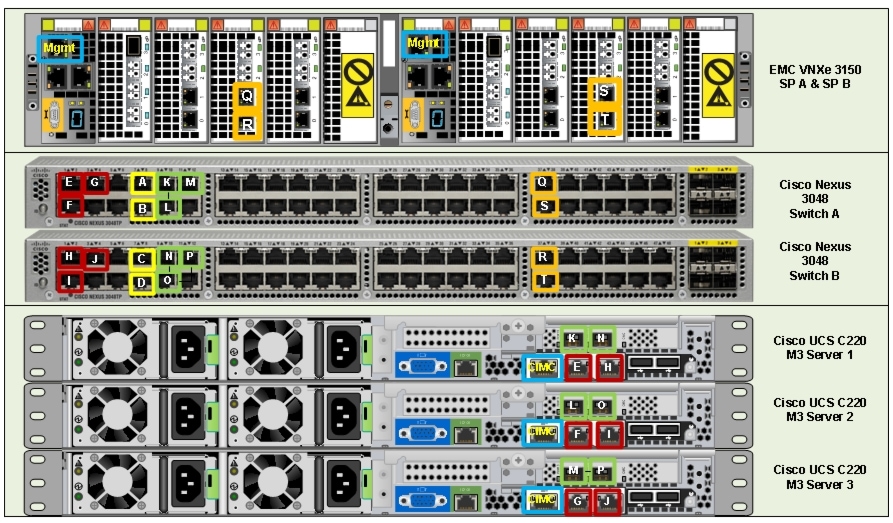

Preparing Switches, Connecting Network, and Configuring Switches

See the Nexus 3048 or Nexus 5548UP installation guide for detailed information about how to mount the switches in the rack. The following diagrams show the connectivity details for the three VMware architectures covered in this document.

As Figure 30, Figure 32, and Figure 34 show, there are five major cabling sections in these architectures:

1.

Inter-switch links.

2.

Data connectivity for servers (trunk links).

3.

Management connectivity for servers.

4.

Storage connectivity.

5.

Infrastructure connectivity.

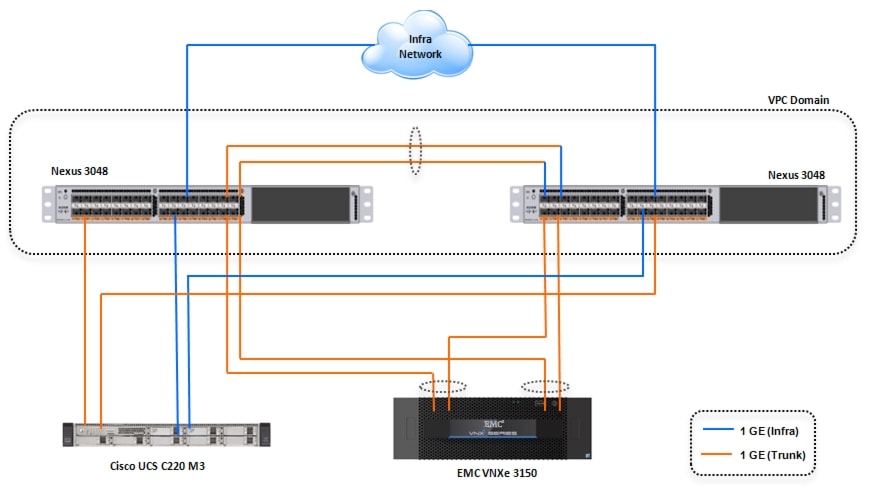

Topology Diagram for 50 Virtual Machines

Figure 30 Topology Diagram for 50 Virtual Machines

Table 11 and Figure 31 provide the detailed cable connectivity for the 50 virtual machines configuration.

Figure 31 Detailed Backplane Connectivity for 50 Virtual Machines

After connecting all the cables according to Table 11, configure the switches.

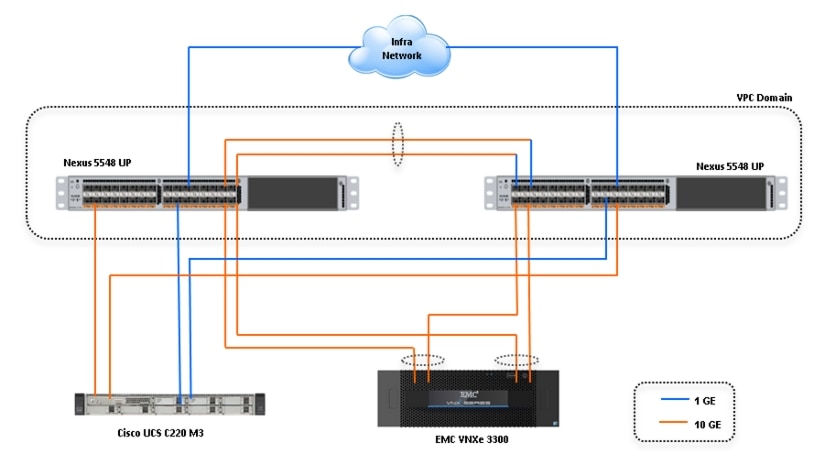

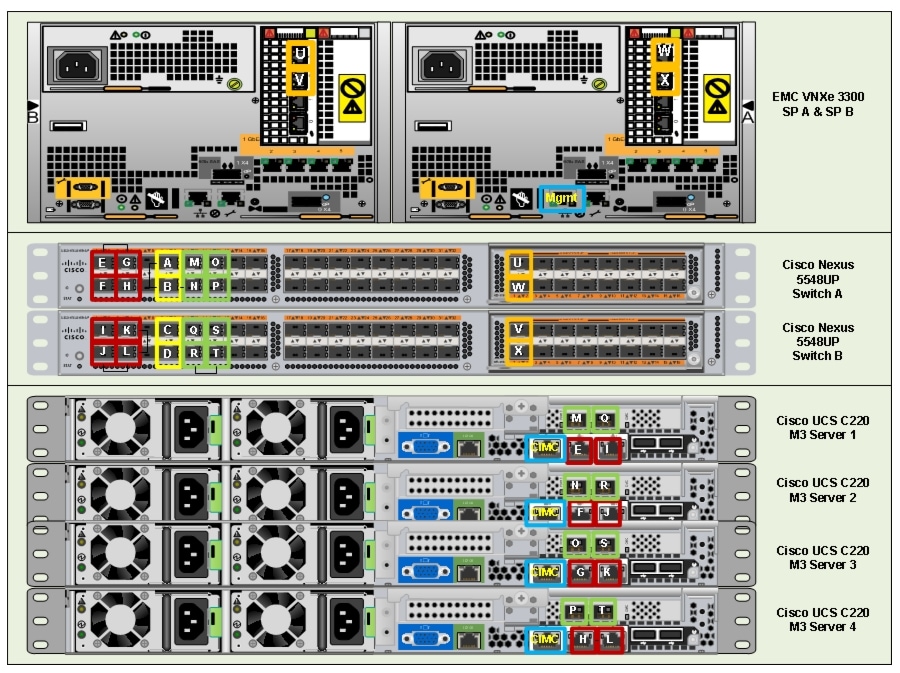

Topology Diagram for 100 Virtual Machines

Figure 32 Topology Diagram for 100 Virtual Machines

Table 12 and Figure 33 provide the detailed cable connectivity for the 100 virtual machines configuration.

Figure 33 Detailed Backplane Connectivity for 100 Virtual Machines

After connecting all the cables according to Table 12, configure the switches.

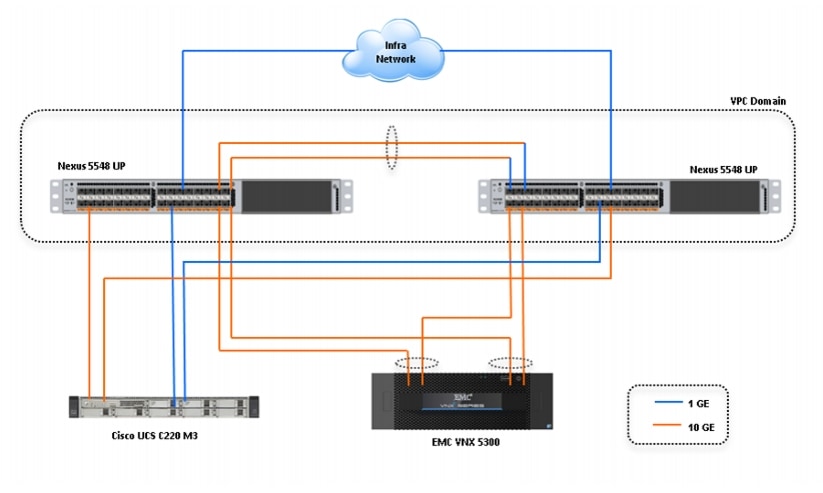

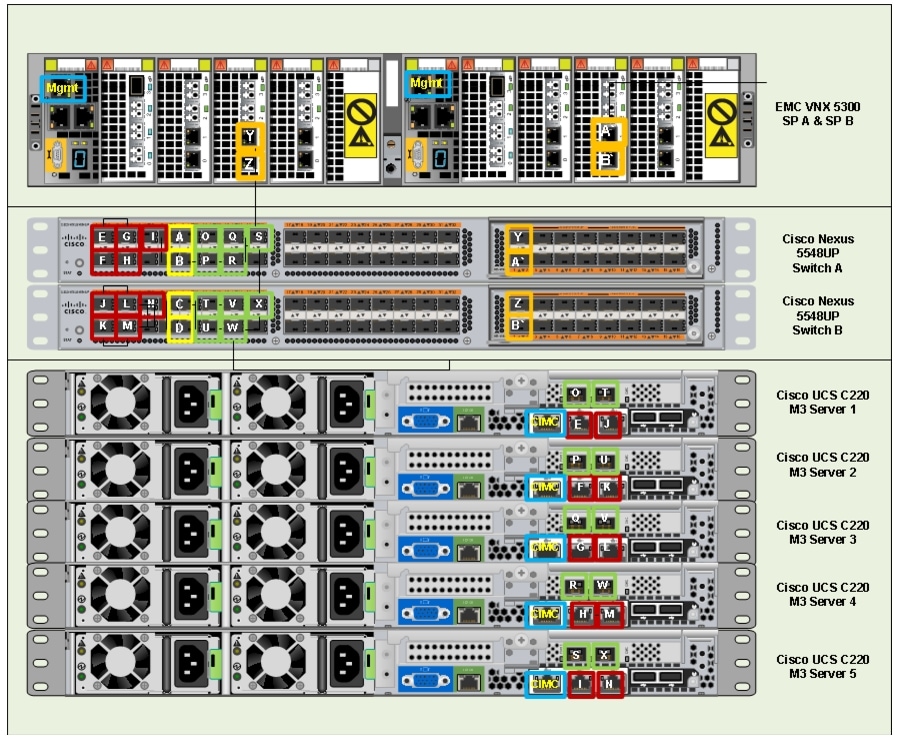

Topology Diagram for Hundred and Twenty Five Virtual Machines

Figure 34 Topology Diagram for 125 Virtual Machines

Table 13 and Figure 35 provide the detailed cable connectivity for the 125 virtual machines configuration.

Figure 35 Detailed Backplane Connectivity for 125 Virtual Machines

After connecting all the cables according to Table 13, configure the switches.

Configuring Cisco Nexus Switches

This section explains the switch configuration needed for the Cisco solution for EMC VSPEX VMware architectures. Configuring passwords and management connectivity, and hardening the device, are not covered here; for those tasks, see the Nexus 3000 and 5000 series configuration guides.

Configure Global VLANs

The following example configures a VLAN on a switch:

```
switch# configure terminal
switch(config)# vlan 40
switch(config-vlan)# name Storage
switch(config-vlan)# exit
```

In addition to your application-specific VLANs, configure the VLANs listed in Table 14 on both switch A and switch B:

For actual VLAN IDs of your deployment, see the section Customer Configuration Data Sheet.
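As a sketch, the infrastructure VLANs can be created in one session in the same CLI style as the example above. Only VLAN 40 (Storage) comes from this document. The IDs and names used for vMotion (50), N1k-control (60), and N1k-packet (61) are hypothetical placeholders, so substitute the values recorded in the Customer Configuration Data Sheet and repeat the same commands on switch B.

```
switch# configure terminal
switch(config)# vlan 40
switch(config-vlan)# name Storage
switch(config-vlan)# exit
switch(config)# vlan 50
switch(config-vlan)# name vMotion
switch(config-vlan)# exit
switch(config)# vlan 60
switch(config-vlan)# name N1k-control
switch(config-vlan)# exit
switch(config)# vlan 61
switch(config-vlan)# name N1k-packet
switch(config-vlan)# end
switch# copy running-config startup-config
```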

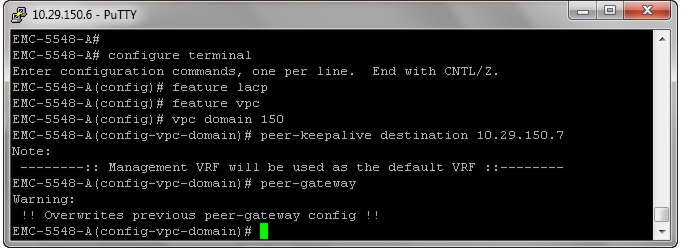

Configuring Virtual Port Channel (vPC)

A virtual port channel (vPC) allows two physical switches to appear as a single logical switch to the connected devices, so that a port channel can span both physical switches. To enable vPC, follow these steps:

1.

Enable LACP feature on both switches.

2.

Enable vPC feature on both switches.

3.

Configure a unique vPC domain ID, identical on both switches.

4.

Configure the peer-keepalive link using the management IP addresses of both switches, and configure peer-gateway, as shown in Figure 36.

Figure 36 Configuring Peer-Gateway

5.

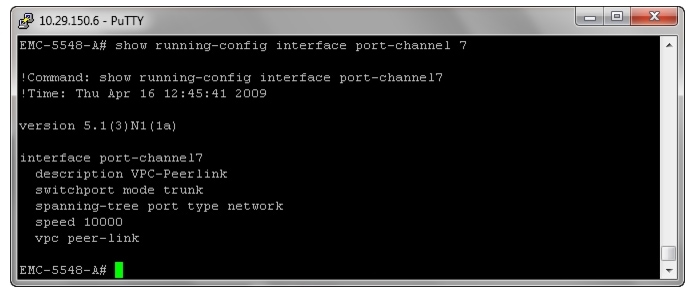

Configure a port channel on the inter-switch links, as shown in Figure 37. Ensure that "vpc peer-link" is configured on this port channel.

Figure 37 Configured VPC Peer-link on Port-Channel

6.

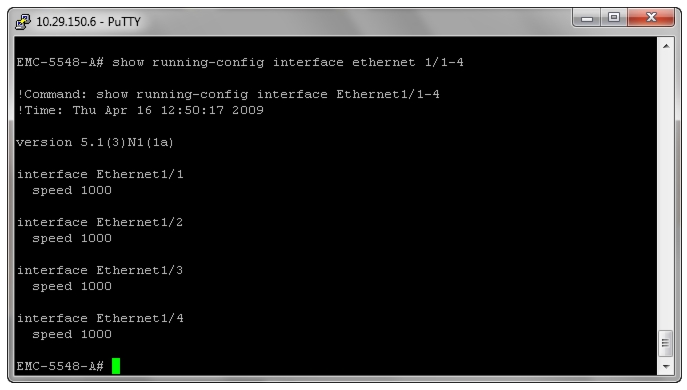

Add the inter-switch link ports to the port channel with LACP by using the "channel-group 7 mode active" command in interface configuration mode.
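The following is a minimal sketch of steps 1 through 6 on switch A, in the same CLI style as the VLAN example. Port-channel 7 and LACP active mode come from this document. The vPC domain ID (101), the peer-keepalive addresses, and the inter-switch link ports (Ethernet 1/31-32) are hypothetical placeholders. On switch B, use the same domain ID and swap the source and destination keepalive addresses.

```
switch-A# configure terminal
switch-A(config)# feature lacp
switch-A(config)# feature vpc
switch-A(config)# vpc domain 101
switch-A(config-vpc-domain)# peer-keepalive destination 192.168.1.12 source 192.168.1.11
switch-A(config-vpc-domain)# peer-gateway
switch-A(config-vpc-domain)# exit
switch-A(config)# interface port-channel 7
switch-A(config-if)# description vPC-peer-link
switch-A(config-if)# switchport mode trunk
switch-A(config-if)# vpc peer-link
switch-A(config-if)# exit
switch-A(config)# interface ethernet 1/31-32
switch-A(config-if-range)# switchport mode trunk
switch-A(config-if-range)# channel-group 7 mode active
switch-A(config-if-range)# end
```

The keepalive travels over the management network rather than the peer link. This lets each switch distinguish a failed peer from a failed peer link.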

Configuring Infrastructure Ports Connected to Servers

Infrastructure links connected to the LOM ports on the servers always operate at 1 Gbps and carry only the infrastructure VLAN. Figure 38 shows the configuration for infrastructure ports on the switches.

Note

In the test environment, VLAN 1 was used as infrastructure VLAN.

If the infrastructure VLAN in your deployment is not VLAN 1, explicitly configure it as the access VLAN by using the "switchport access vlan <id>" command in interface configuration mode.

Figure 38 Configuring Infrastructure Ports on Switches
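A single infrastructure port could be configured as in the following sketch. It follows the test environment, where VLAN 1 was the infrastructure VLAN. The interface number (Ethernet 1/13) and the description are hypothetical. The "speed 1000" line pins the port at 1 Gbps, which matters mainly on ports that would otherwise run at 10 Gbps.

```
switch-A# configure terminal
switch-A(config)# interface ethernet 1/13
switch-A(config-if)# description ESXi-host1-LOM-infra
switch-A(config-if)# switchport mode access
switch-A(config-if)# switchport access vlan 1
switch-A(config-if)# speed 1000
switch-A(config-if)# no shutdown
switch-A(config-if)# end
```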

Configuring Trunk Ports Connected to Servers

Data ports connected to the ESXi servers must be in trunk mode. The storage, vMotion, N1k-control, N1k-packet, and application VLANs are allowed on these ports. For the 100 and 125 virtual machines architectures, these trunk ports operate at 10 Gbps. Cisco recommends giving each port and port channel on the switch a meaningful description to ease troubleshooting later. Figure 39 shows the exact configuration commands.

Figure 39 Port-Channel Configuration Commands

This test environment does not use virtual port channels across the two switches for server-side load balancing and high availability. Instead, it relies on the port-profile-based load balancing and high availability defined in the Cisco Nexus 1000v virtual distributed switch on the host, which reduces the complexity of the architecture. As described in the next configuration step, port channels and vPCs are used on the storage-side connectivity for load balancing and high availability.
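Because the server-facing ports are individual trunks rather than vPC members, each port is configured on its own, as in the following sketch. The interface number, the description, and every VLAN ID except 40 (Storage) are hypothetical placeholders. Here 50 stands for vMotion, 60-61 for N1k-control and N1k-packet, and 100 for an application VLAN.

```
switch-A# configure terminal
switch-A(config)# interface ethernet 1/1
switch-A(config-if)# description ESXi-host1-data-port1
switch-A(config-if)# switchport mode trunk
switch-A(config-if)# switchport trunk allowed vlan 40,50,60-61,100
switch-A(config-if)# no shutdown
switch-A(config-if)# end
```

Restricting the allowed VLAN list keeps unrelated VLANs off the host links. It also makes the "show vlan" output easier to check later.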

Configuring Storage Connectivity

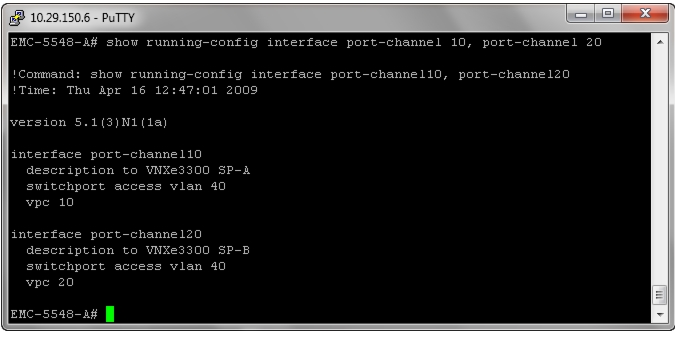

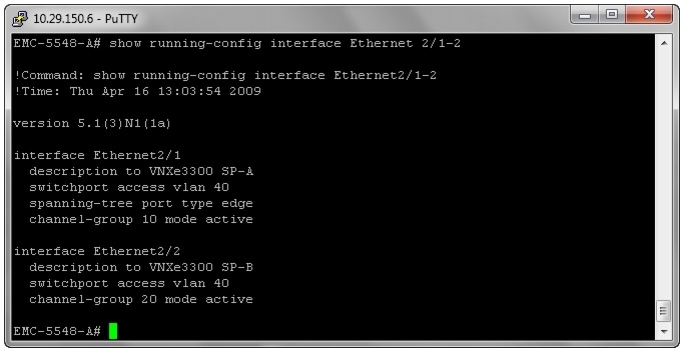

Each switch has one link to each storage processor on the VNX/VNXe storage array. A virtual port channel is created for the two links that connect to a single storage processor from the two different switches. In this example configuration, the links to storage processor A (SP-A) of the VNXe3300 storage array are connected to Ethernet port 2/1 on each switch, and the links to storage processor B (SP-B) are connected to Ethernet port 2/2 on each switch. A virtual port channel (ID 10) is created for Ethernet port 2/1 on each switch, and a different virtual port channel (ID 20) is created for Ethernet port 2/2 on each switch. Figure 40 shows the configuration of the port channels.

Figure 40 Configured Virtual Port-Channels

Figure 41 shows the exact configuration on each port connected to the storage array.

Note

Only the storage VLAN is required on this port, so the port is configured in access mode.

The port is part of the port channel configured in Figure 40.

Figure 41 Port Connections to Storage Arrays
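The port-channel and member-port settings described above could look like the following sketch. The port-channel IDs (10 and 20) and Ethernet ports 2/1 and 2/2 come from this section, and VLAN 40 is the Storage VLAN from the earlier VLAN example. LACP active mode is an assumption that mirrors the peer-link configuration, so match it to the link aggregation settings on the storage array. The same commands apply on both switches, because each switch uses identical port numbers.

```
switch-A# configure terminal
switch-A(config)# interface port-channel 10
switch-A(config-if)# description vPC-to-VNXe-SP-A
switch-A(config-if)# switchport mode access
switch-A(config-if)# switchport access vlan 40
switch-A(config-if)# vpc 10
switch-A(config-if)# exit
switch-A(config)# interface ethernet 2/1
switch-A(config-if)# description VNXe-SP-A
switch-A(config-if)# switchport mode access
switch-A(config-if)# switchport access vlan 40
switch-A(config-if)# channel-group 10 mode active
switch-A(config-if)# exit
switch-A(config)# interface port-channel 20
switch-A(config-if)# description vPC-to-VNXe-SP-B
switch-A(config-if)# switchport mode access
switch-A(config-if)# switchport access vlan 40
switch-A(config-if)# vpc 20
switch-A(config-if)# exit
switch-A(config)# interface ethernet 2/2
switch-A(config-if)# description VNXe-SP-B
switch-A(config-if)# switchport mode access
switch-A(config-if)# switchport access vlan 40
switch-A(config-if)# channel-group 20 mode active
switch-A(config-if)# end
```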

Configure ports connected to infrastructure network

Ports connected to the infrastructure network must be in trunk mode and require, at a minimum, the infrastructure VLAN and the N1k-control and N1k-packet VLANs. You may need to enable more VLANs as required by your application domain. For example, Windows virtual machines may need to access Active Directory or DNS servers deployed in the infrastructure network. You may also want to enable port channels and virtual port channels for high availability of the infrastructure network.
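If you use a vPC toward the infrastructure network, the uplink could be configured along the lines of the following sketch. Port-channel 15 matches the infrastructure port channel named in the verification example later in this section. The member interface (Ethernet 1/47) and the N1k-control and N1k-packet VLAN IDs (60-61) are hypothetical placeholders, and VLAN 1 is the infrastructure VLAN used in the test environment. Repeat the commands on switch B with its own member port.

```
switch-A# configure terminal
switch-A(config)# interface port-channel 15
switch-A(config-if)# description vPC-to-infrastructure-network
switch-A(config-if)# switchport mode trunk
switch-A(config-if)# switchport trunk allowed vlan 1,60-61
switch-A(config-if)# vpc 15
switch-A(config-if)# exit
switch-A(config)# interface ethernet 1/47
switch-A(config-if)# switchport mode trunk
switch-A(config-if)# switchport trunk allowed vlan 1,60-61
switch-A(config-if)# channel-group 15 mode active
switch-A(config-if)# end
```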

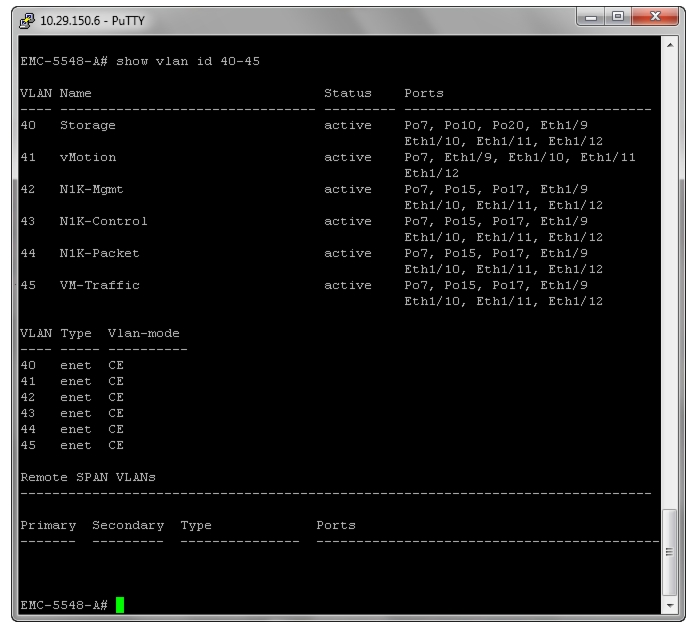

Verify VLAN and port-channel configuration

At this point, all ports and port channels are configured with the necessary VLANs, switchport mode, and vPC configuration. Validate this configuration using the "show vlan", "show port-channel summary", and "show vpc" commands, as shown in Figure 42.

Figure 42 Validating Created Port-Channels with VLANs

The "show vlan" command can be restricted to a single VLAN or a set of VLANs, as shown in Figure 42. Ensure that on both switches, all required VLANs are in "active" status and the right set of ports and port channels belong to each required VLAN.

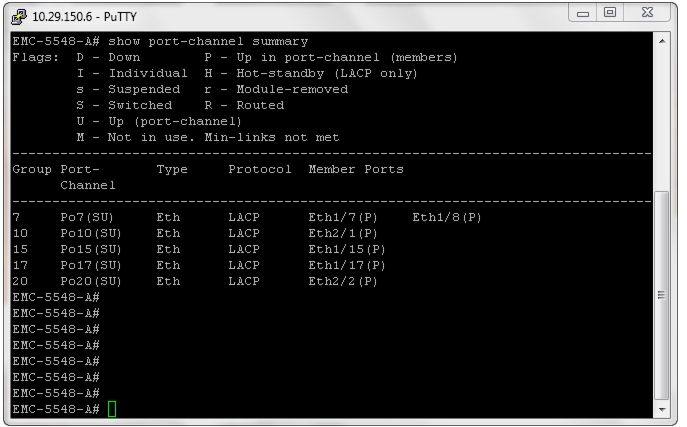

Port-channel configuration can be verified using "show port-channel summary" command. Figure 43 shows the expected output of this command.

Figure 43 Verifying Port-Channel configuration

In this example, port-channel 7 is the vPC peer-link port channel, port-channels 10 and 20 are connected to the storage arrays, and port-channels 15 and 17 are connected to the infrastructure network. Make sure that the state of the member ports of each port channel is "P" (up in port-channel). A port may not come up if the peer ports are not properly configured. Common reasons for a port-channel member port being down are:

•

Port-channel protocol mismatch between the peers (LACP versus none).

•

Inconsistencies between the two vPC peer switches. Use the "show vpc consistency-parameters {global | interface {port-channel | port} <id>}" command to diagnose such inconsistencies.
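When a member port stays down, the following commands can help narrow down which of these causes applies. Port-channel and vPC 10 are the storage-side IDs from this section and are used only as examples. Run the commands on both peers and compare the output.

```
switch-A# show lacp neighbor
switch-A# show vpc consistency-parameters global
switch-A# show vpc consistency-parameters interface port-channel 10
switch-A# show vpc consistency-parameters vpc 10
```

An empty LACP neighbor entry for a port channel usually points to a protocol mismatch with the peer device. Differing values in the consistency-parameters output point to a configuration mismatch between the vPC peers.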

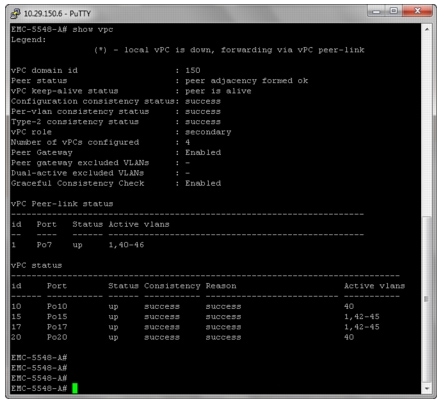

You can verify the vPC status using the "show vpc" command. Figure 44 shows example output.

Figure 44 Verifying VPC Status

Ensure that the vPC peer status is "peer adjacency formed ok" and that all the port channels, including the peer-link port channel, are "up".
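As a recap, the validation commands from this section can be run in sequence on each switch. In this sketch, VLAN 40 is the Storage VLAN from the earlier example. The other VLAN IDs in the list (50 and 60-61) are hypothetical placeholders for the remaining Table 14 VLANs.

```
switch-A# show vlan id 40
switch-A# show vlan id 40,50,60-61
switch-A# show port-channel summary
switch-A# show vpc
```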

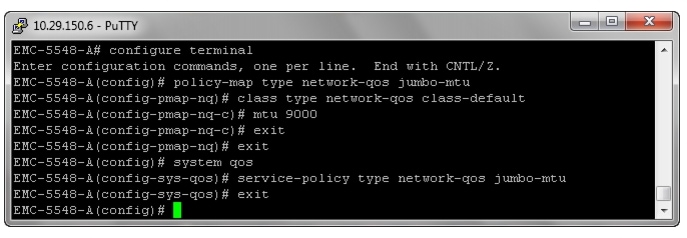

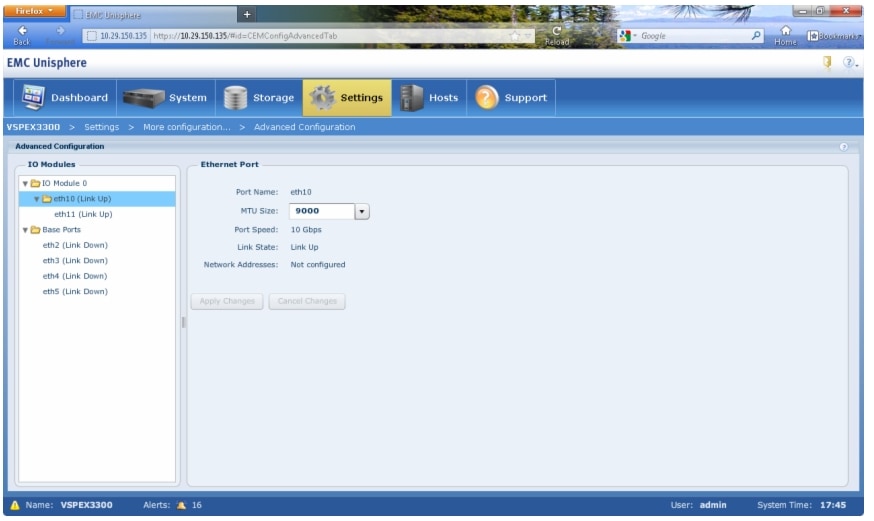

Configure QoS

The Cisco solution for the EMC VSPEX VMware architectures requires the MTU to be set to 9000 (jumbo frames) for efficient storage and vMotion traffic. On Cisco Nexus 5000 and 3000 series switches, MTU configuration falls under the global QoS configuration. You may need to configure additional QoS parameters as required by the applications. For more information on QoS configuration, see the Nexus 3000 and 5000 series configuration guides.

To configure a 9000 MTU on the Nexus 5000 and 3000 series switches, follow these steps on both switch A and switch B (Figure 45 shows the CLI):

1.

Create a policy map named "jumbo-mtu".

2.

Because no specific QoS classification is created, set the MTU to 9000 on the default class.

3.

Apply the "jumbo-mtu" policy map as the system-level service policy under the global "system qos" configuration.

Figure 45 shows the exact Cisco Nexus CLI for the steps mentioned above.

Figure 45 Configuring MTU on Cisco Nexus Switches
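The three steps above correspond roughly to the following network-qos sketch. The policy name "jumbo-mtu" and the 9000 MTU come from this section. The prompts shown are representative and may differ slightly between the Nexus 3000 and 5000 platforms and software releases.

```
switch-A# configure terminal
switch-A(config)# policy-map type network-qos jumbo-mtu
switch-A(config-pmap-nq)# class type network-qos class-default
switch-A(config-pmap-nq-c)# mtu 9000
switch-A(config-pmap-nq-c)# exit
switch-A(config-pmap-nq)# exit
switch-A(config)# system qos
switch-A(config-sys-qos)# service-policy type network-qos jumbo-mtu
switch-A(config-sys-qos)# end
switch-A# copy running-config startup-config
```

The MTU is set on the default class because no other classes are defined. As a result, every frame that is not otherwise classified, including storage and vMotion traffic, is allowed to reach the jumbo size.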

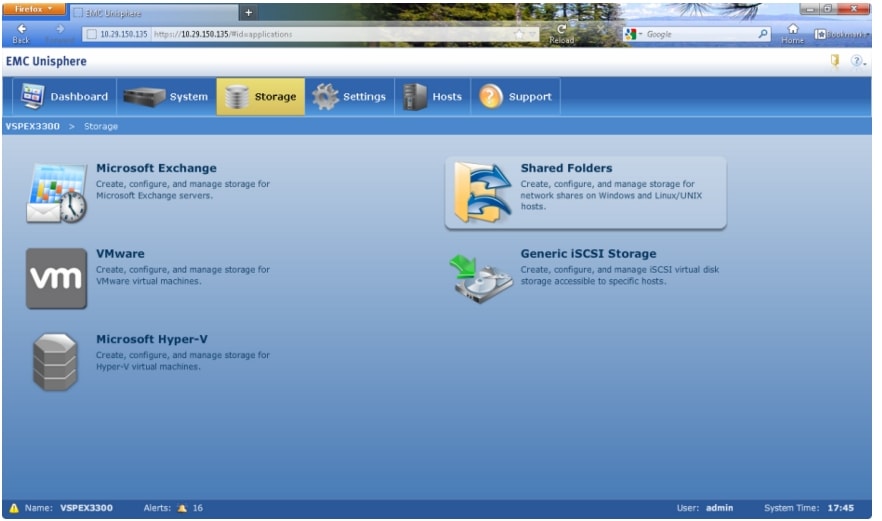

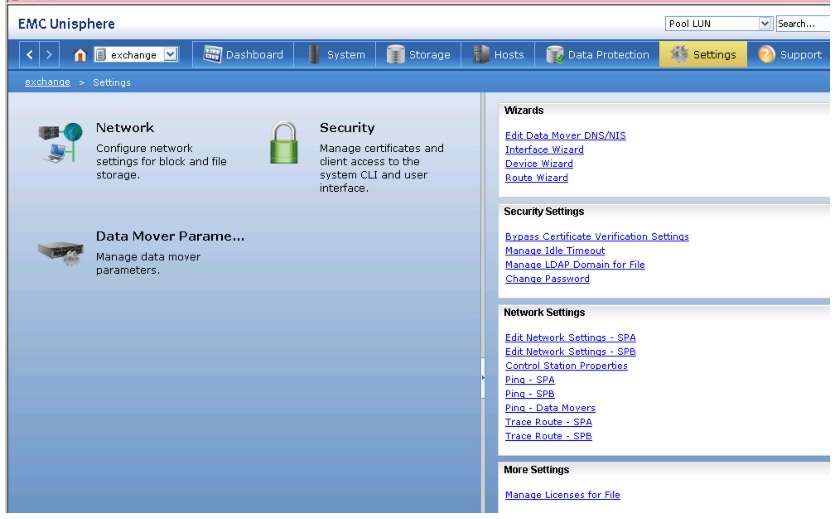

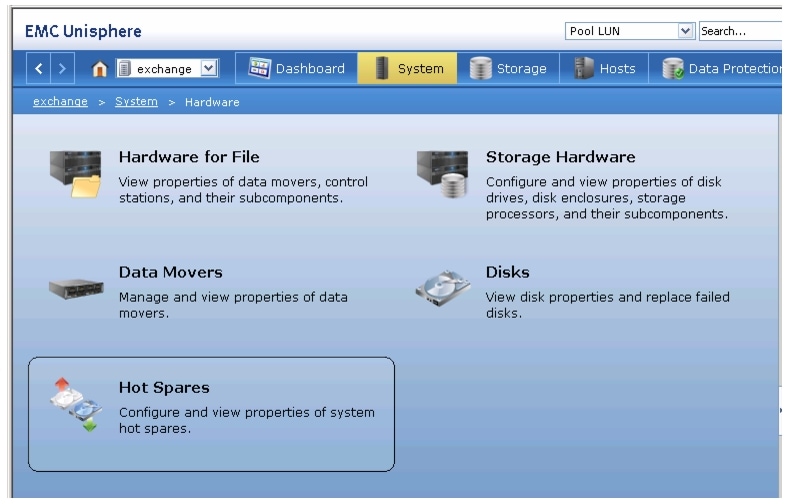

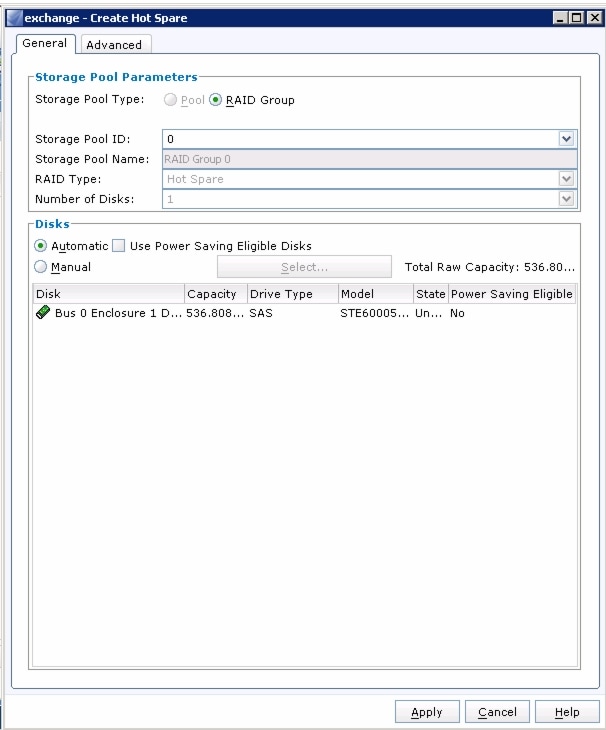

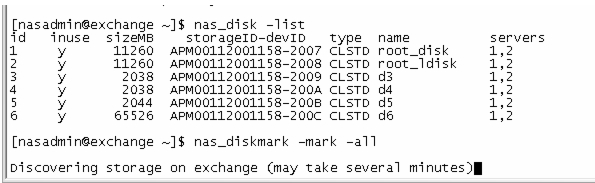

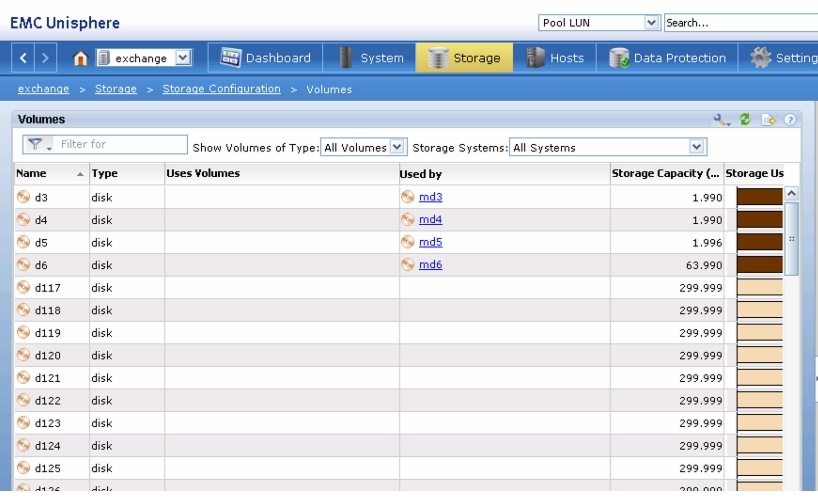

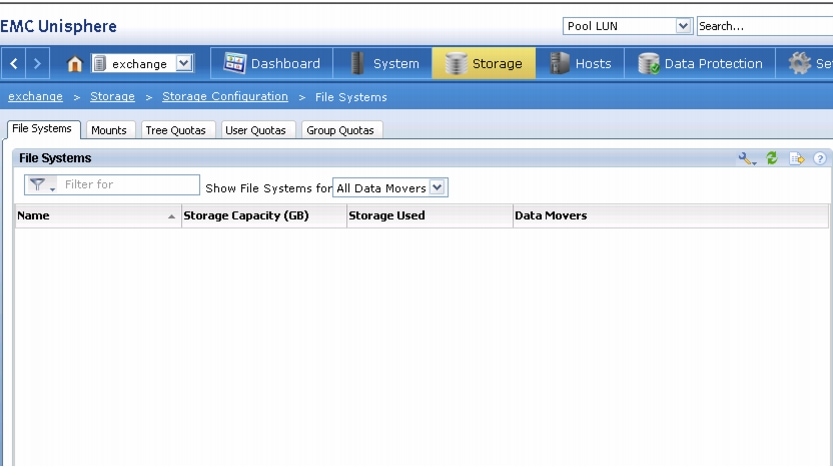

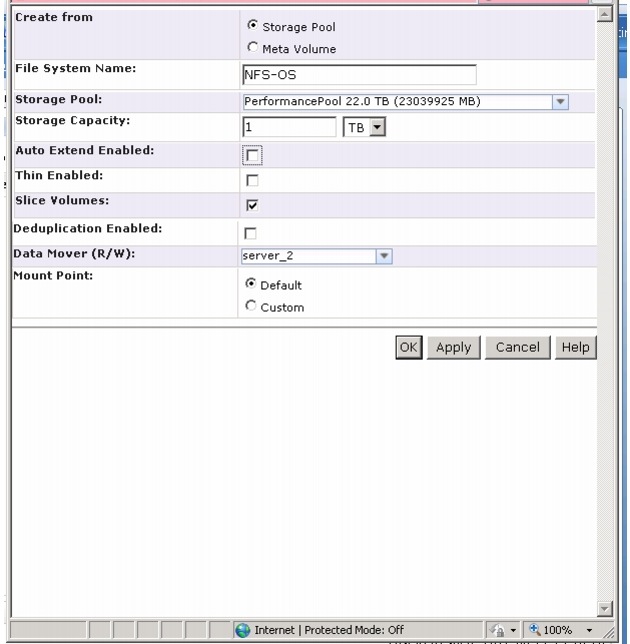

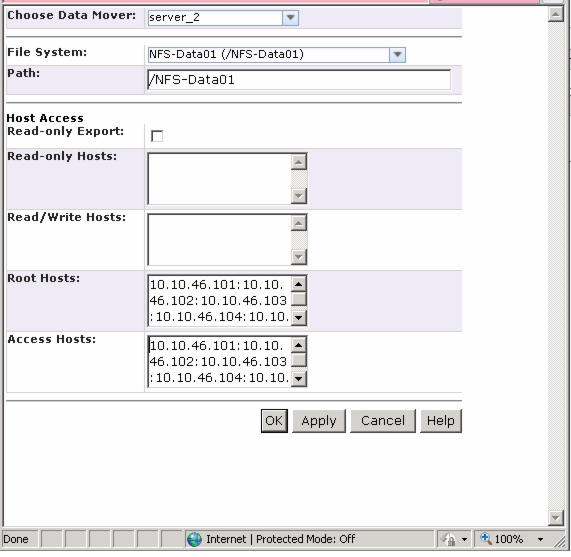

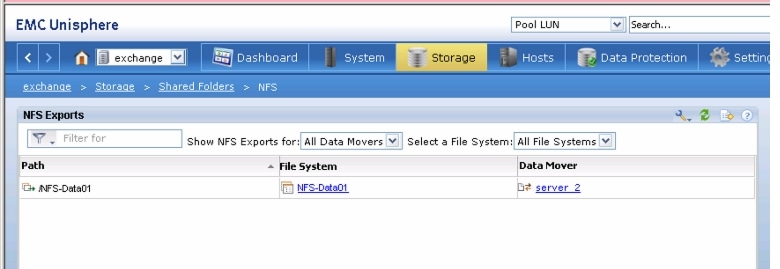

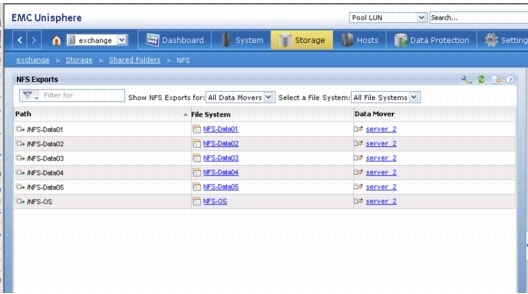

Preparing and Configuring EMC Storage Array

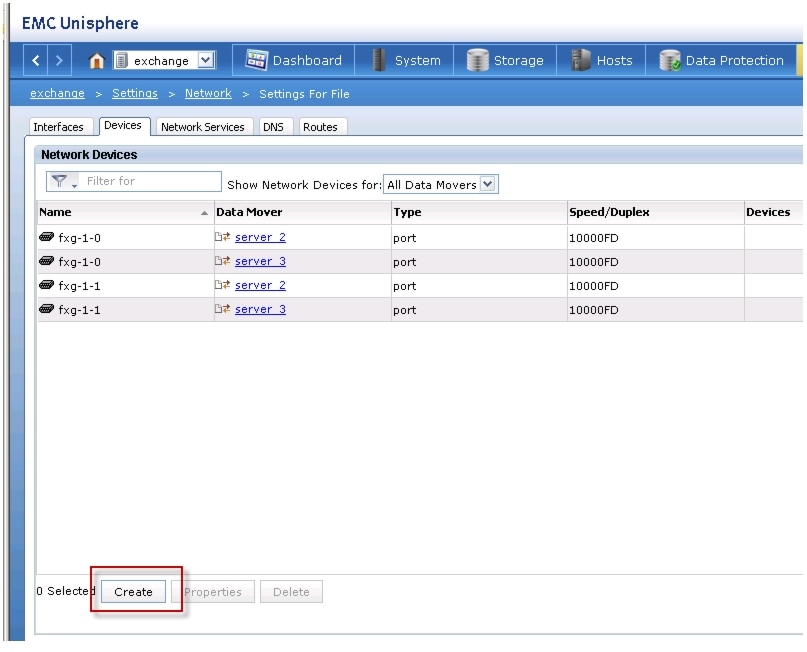

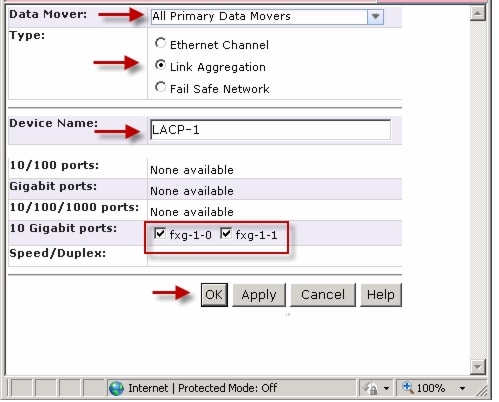

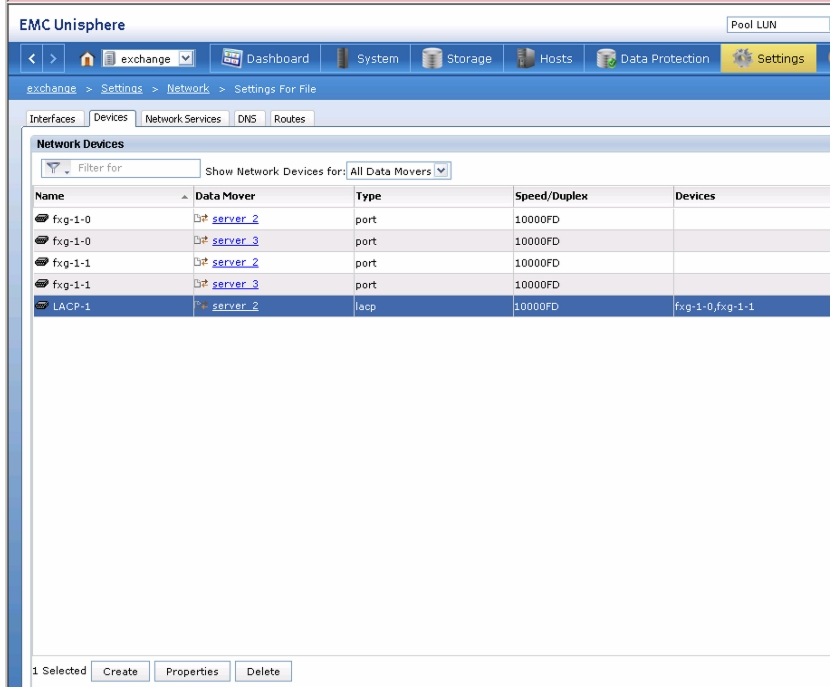

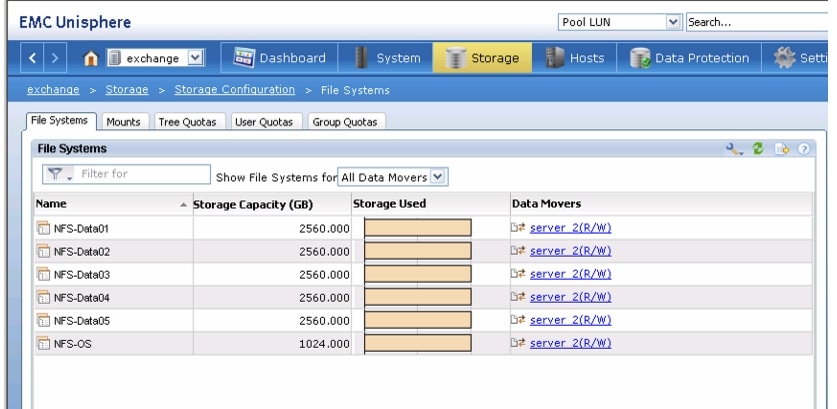

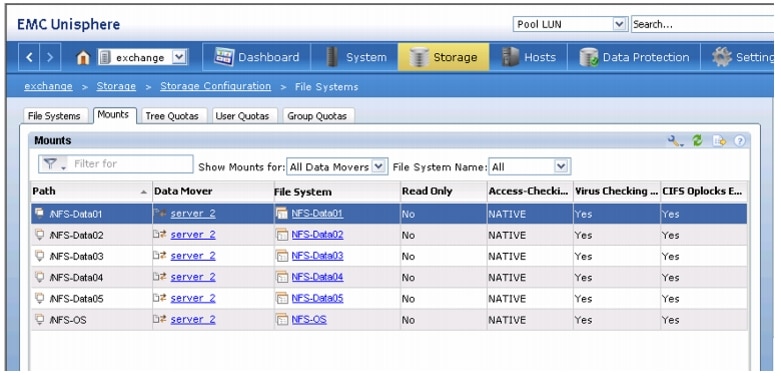

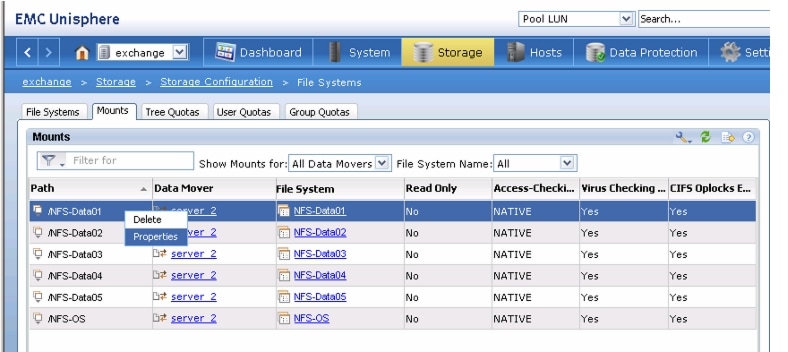

Storage array configuration for the VMware architectures varies with scale. For 50 and 100 virtual machines, the VNXe3150 and VNXe3300 arrays are used, respectively. For 125 virtual machines, the VNX5300 array is used. GUI configuration differs significantly between the VNXe and VNX arrays, so each is described separately in the following sections.

At a high level, the following steps are performed:

1.

Create a single datastore for the virtual machine operating systems, and at least two datastores for the virtual machine data.

2.

Configure NFS share and assign host access privileges.

3.

Configure port channels (link aggregation) and jumbo frames.

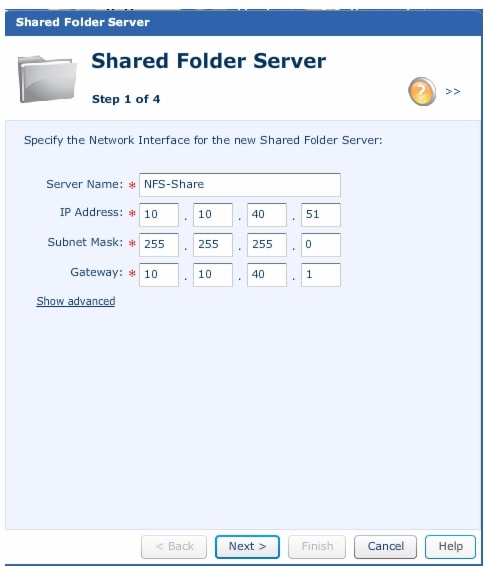

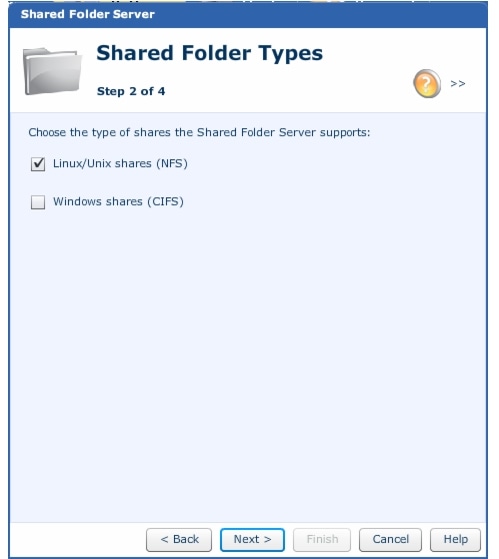

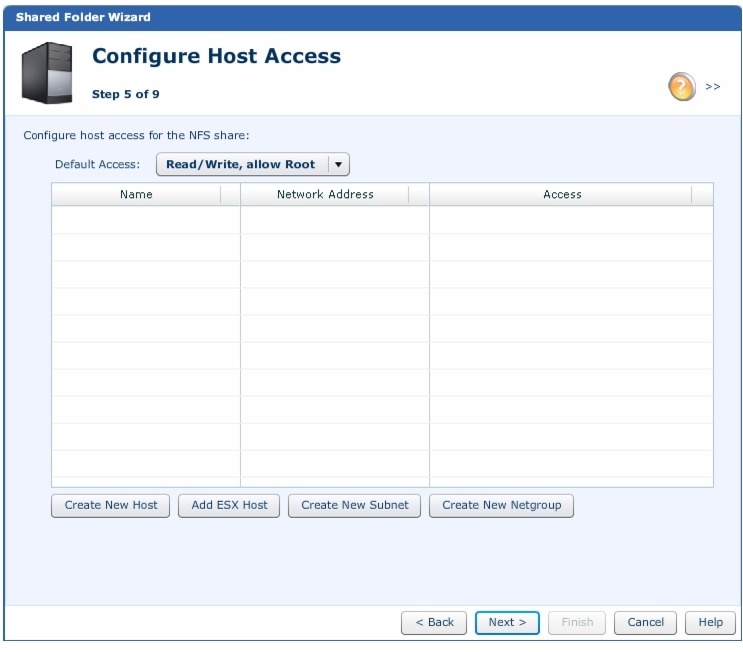

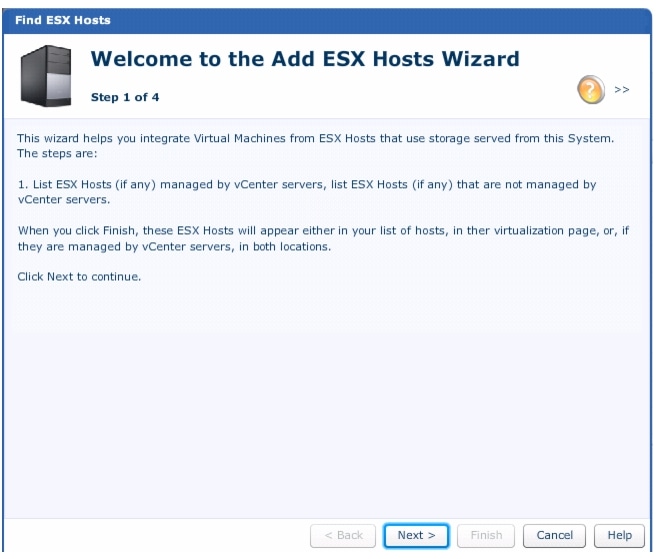

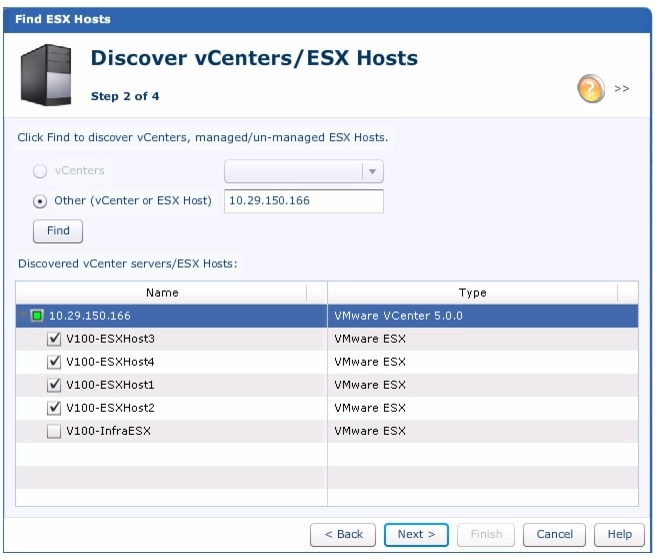

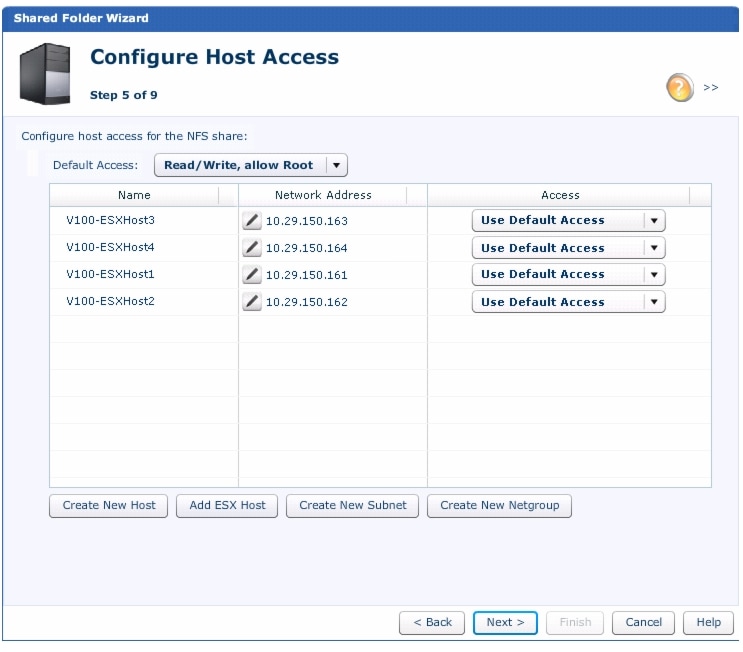

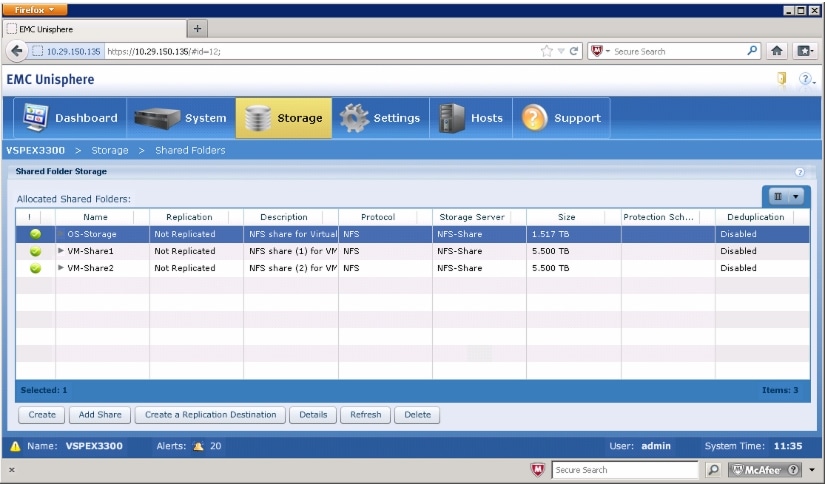

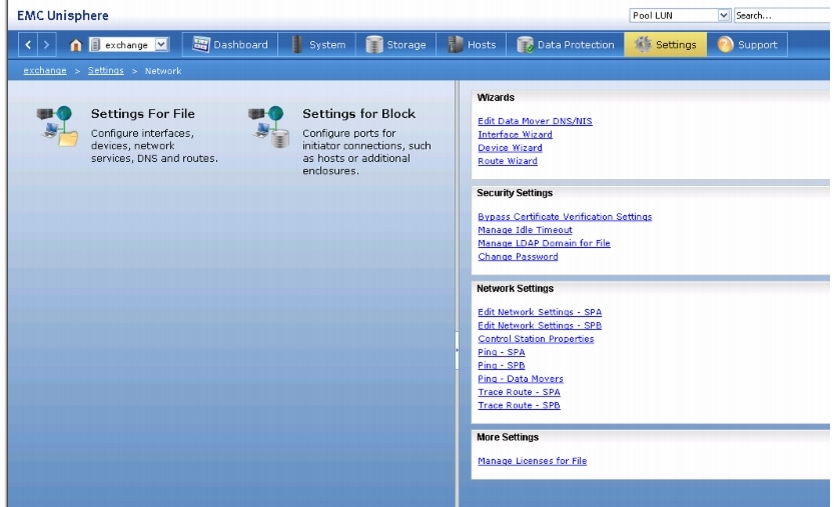

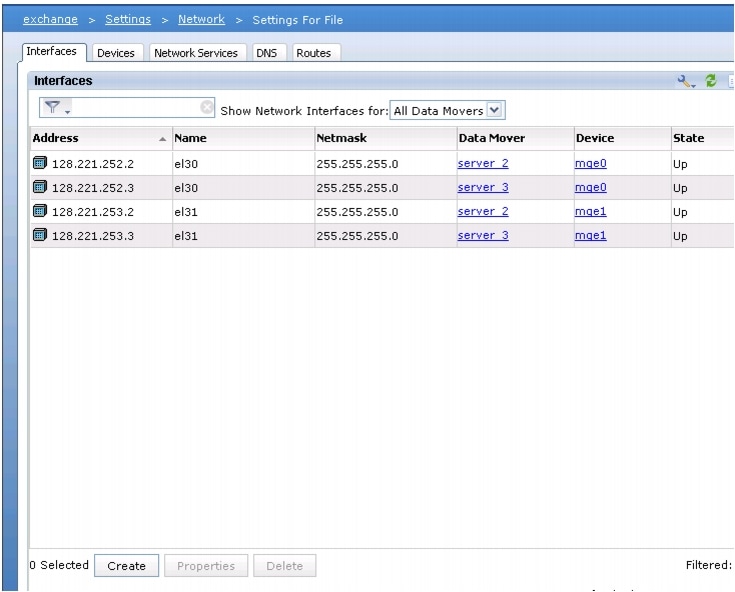

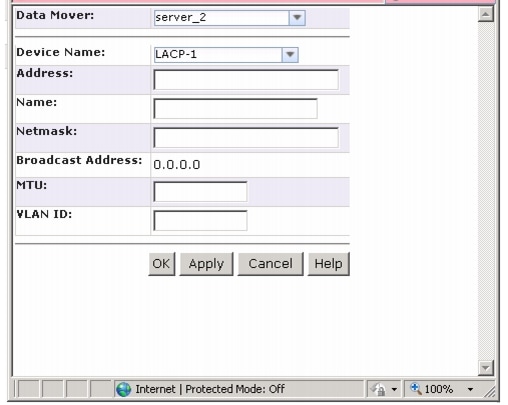

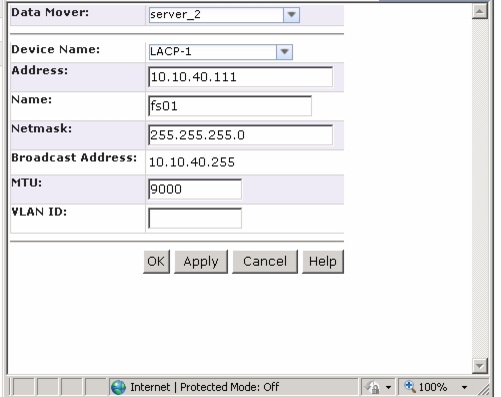

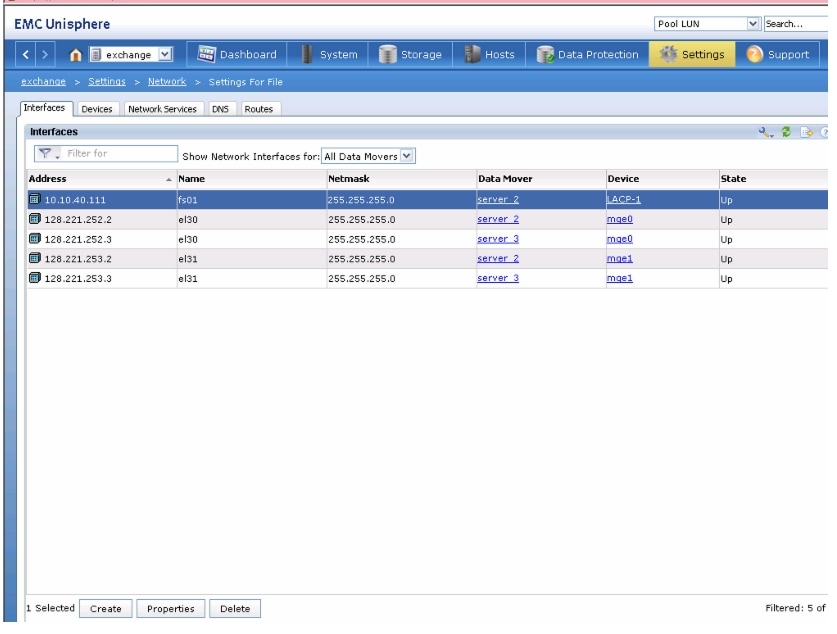

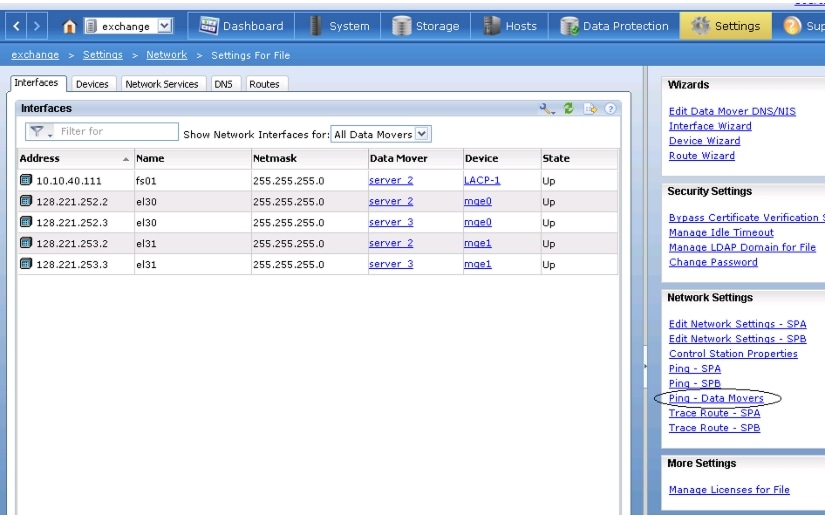

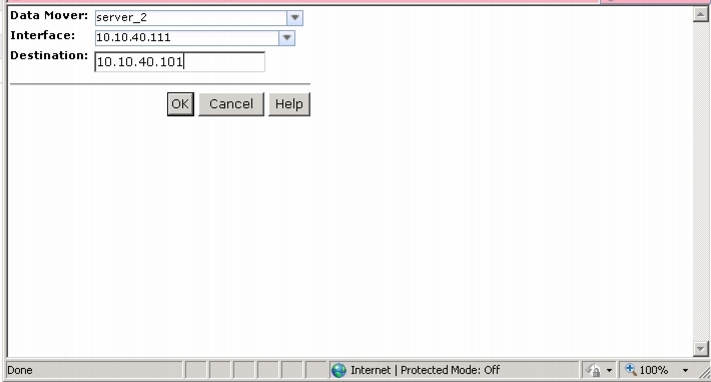

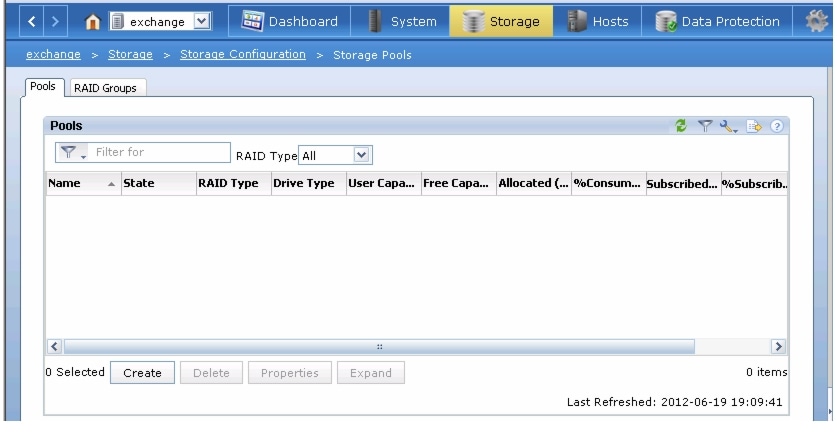

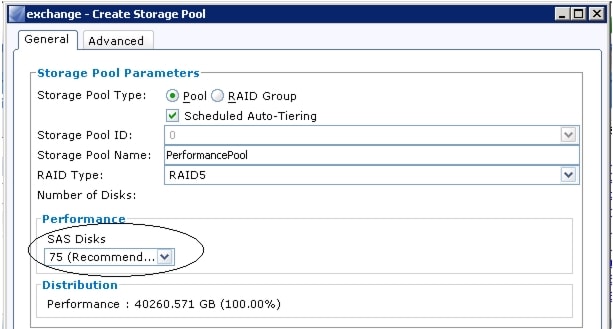

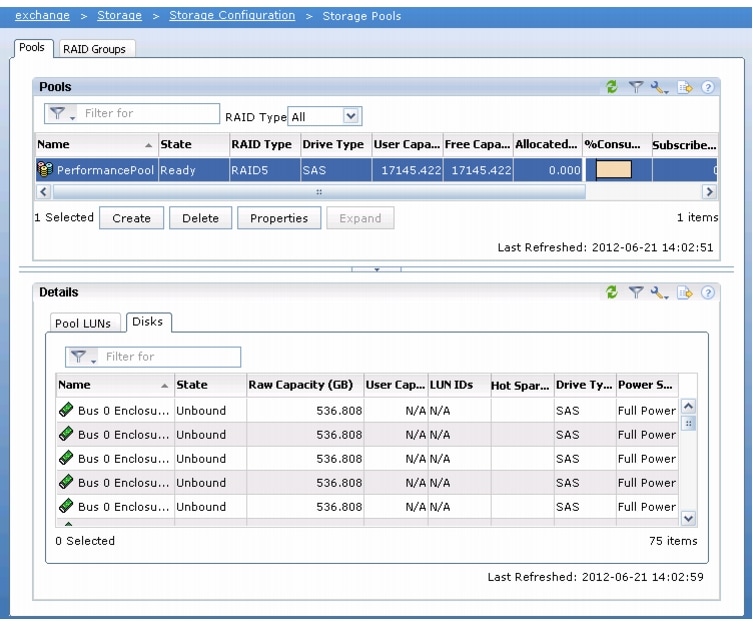

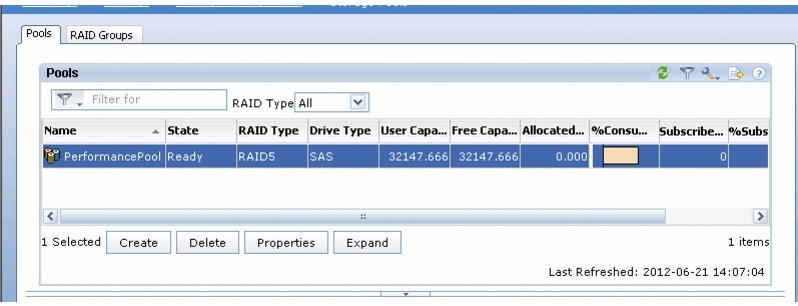

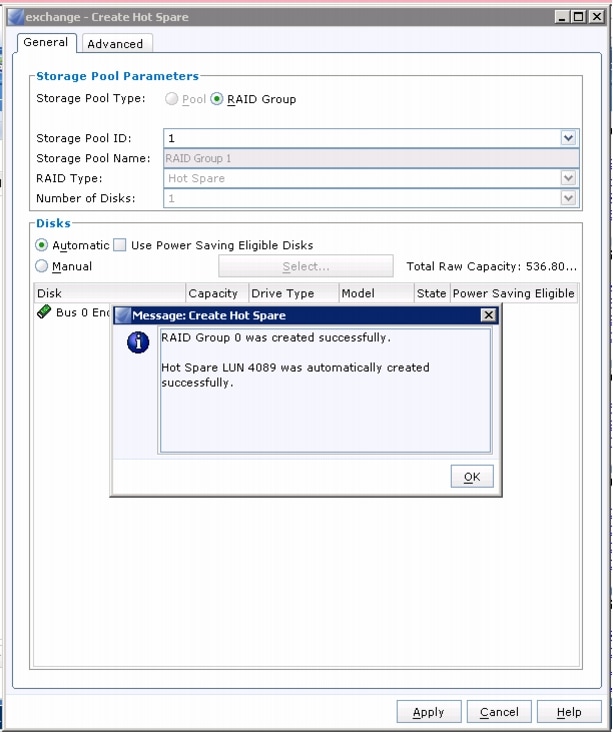

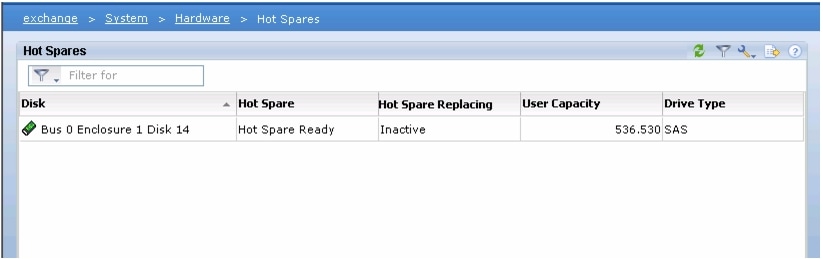

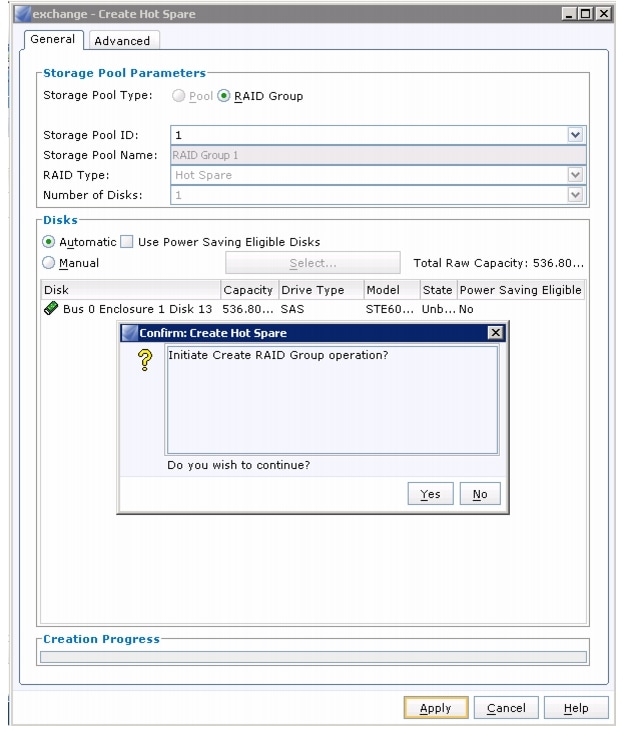

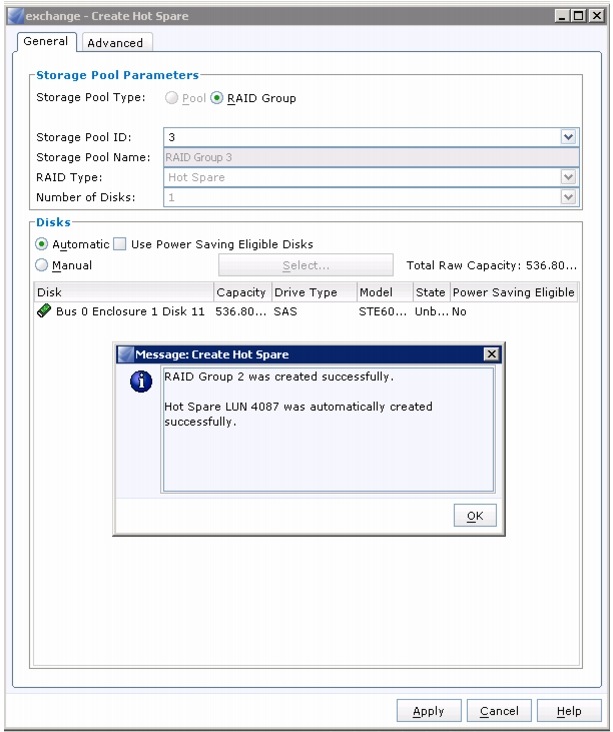

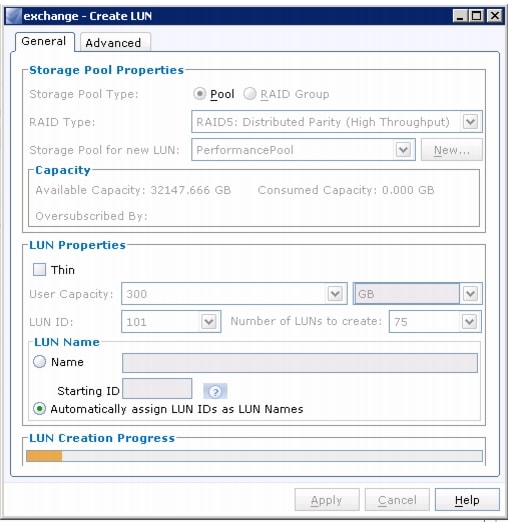

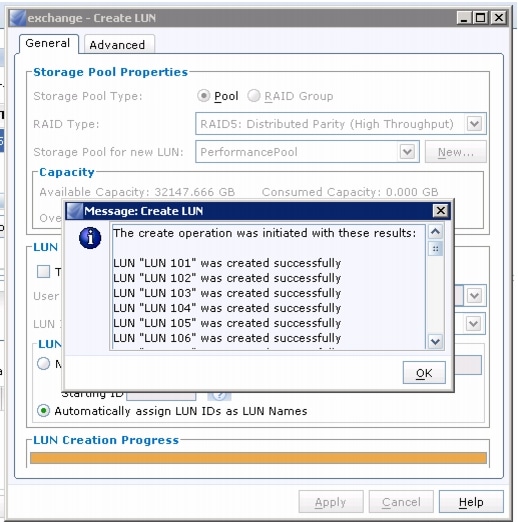

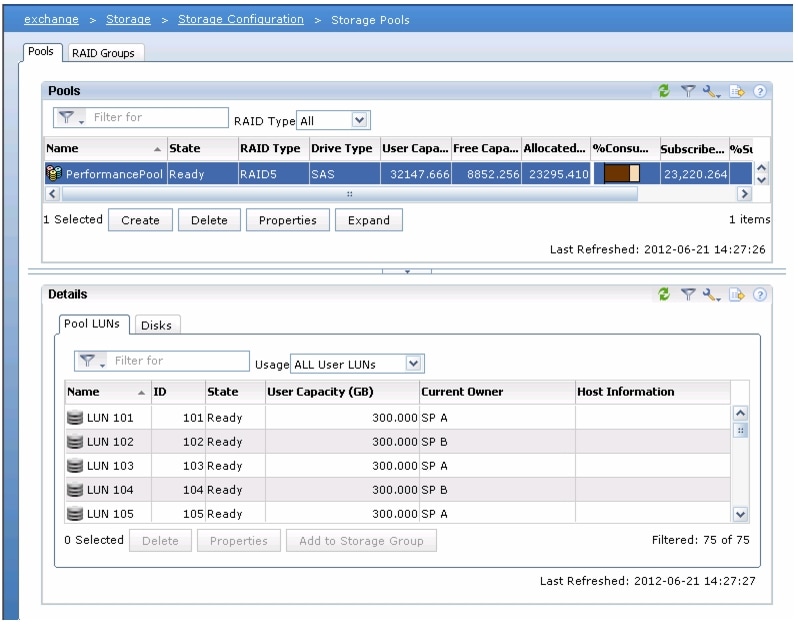

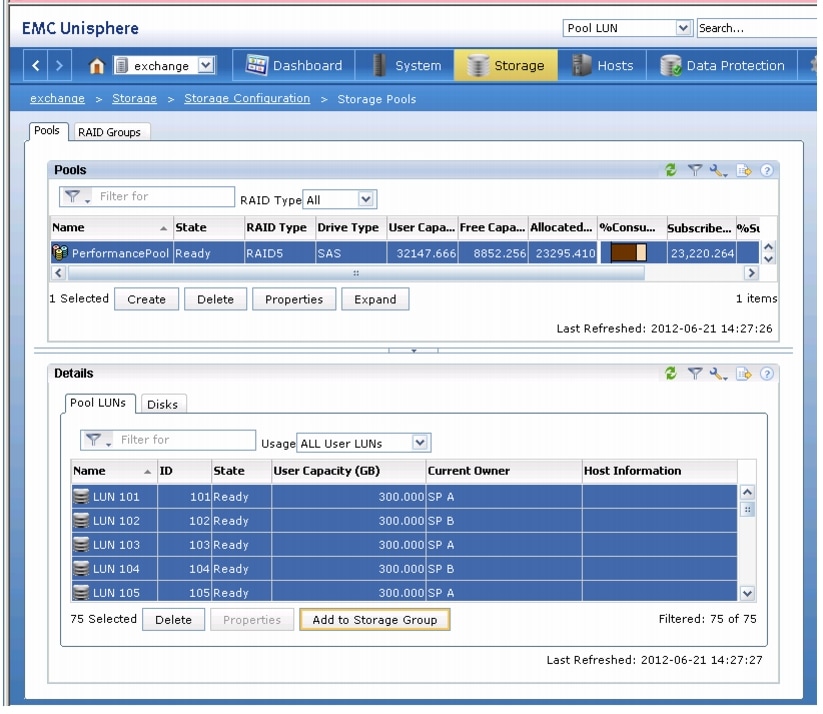

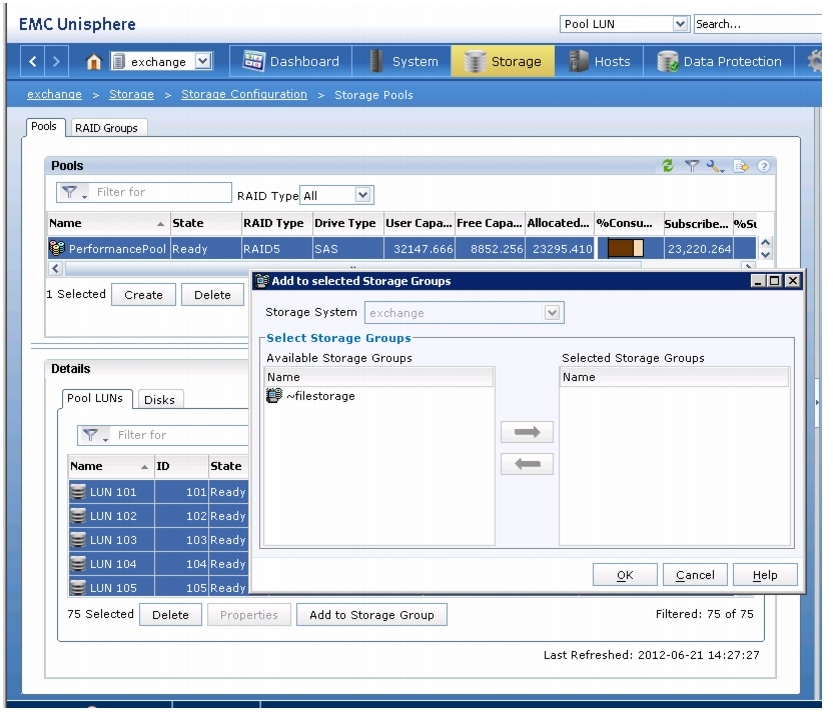

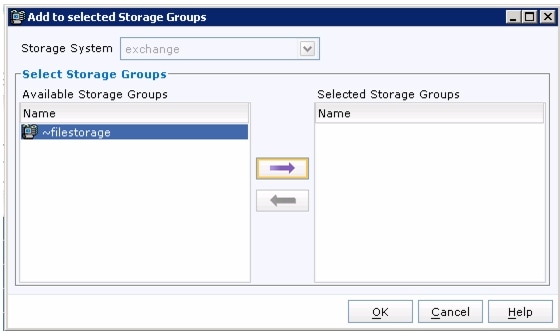

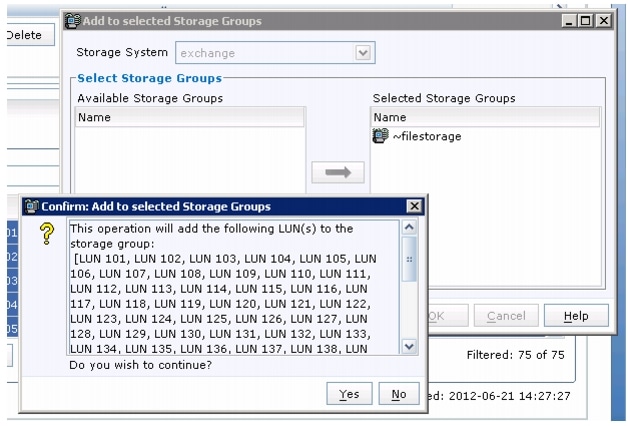

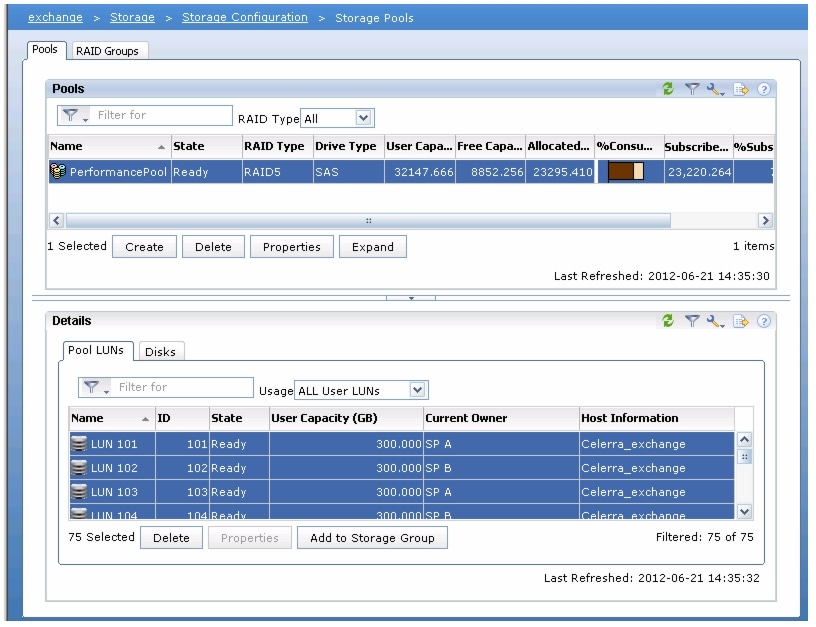

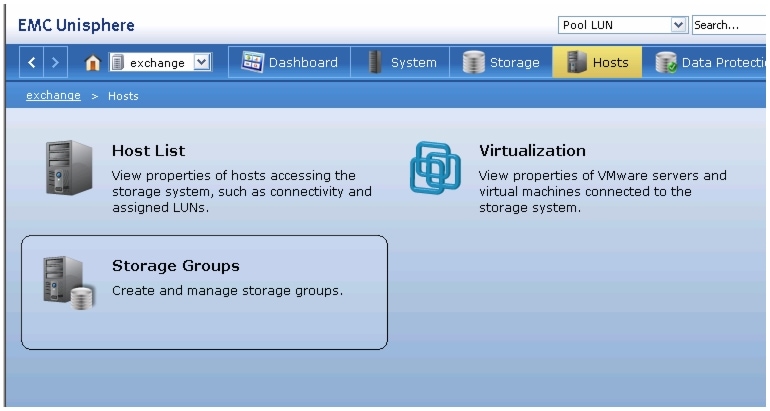

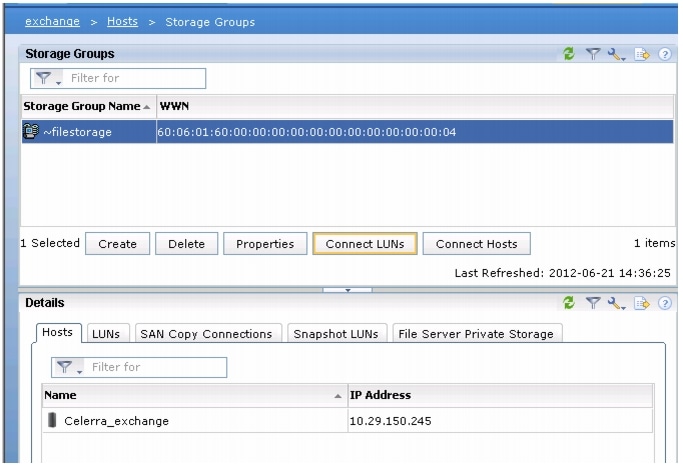

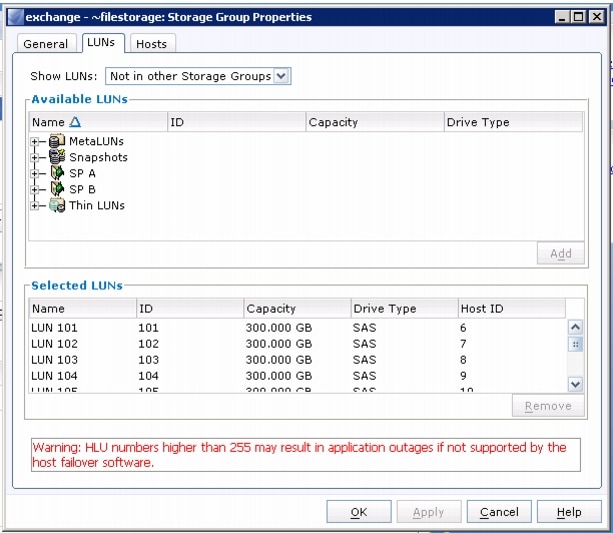

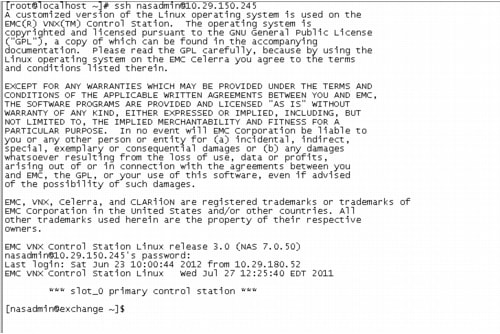

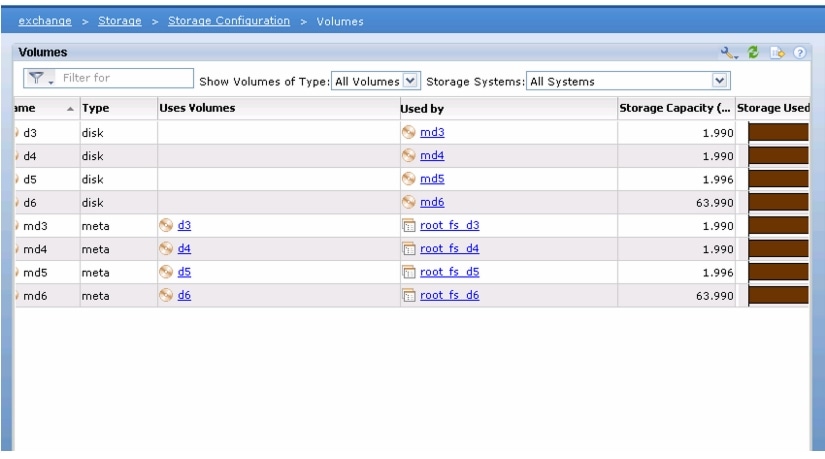

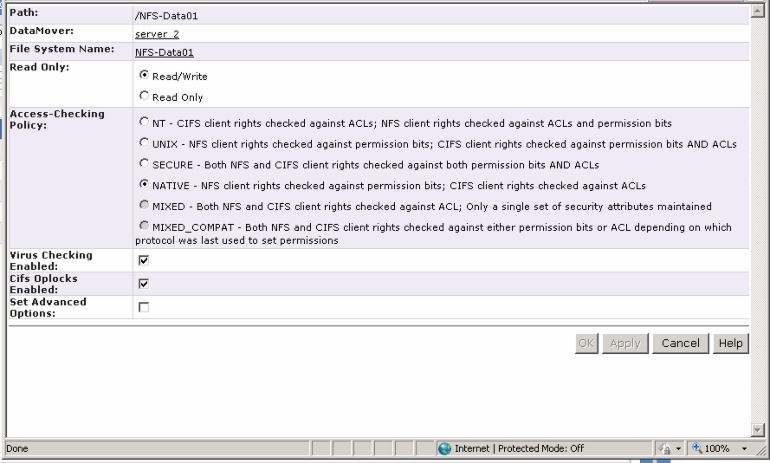

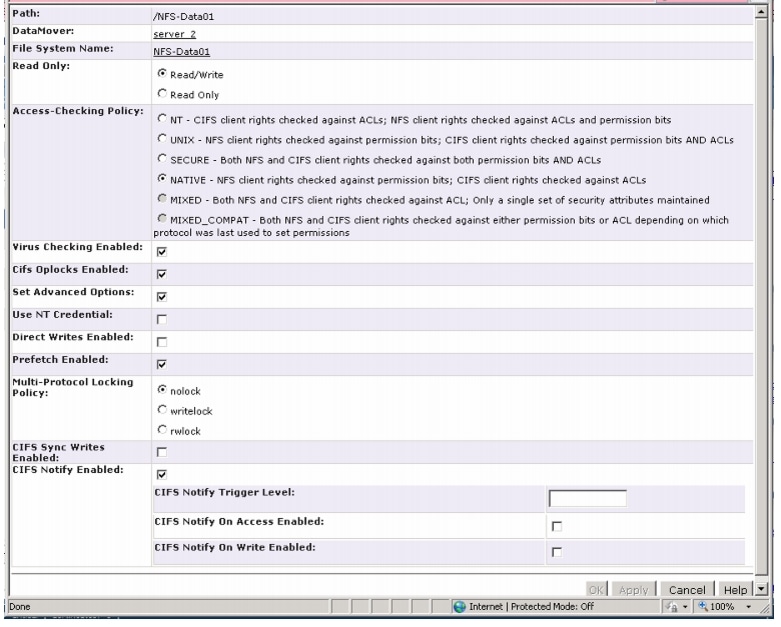

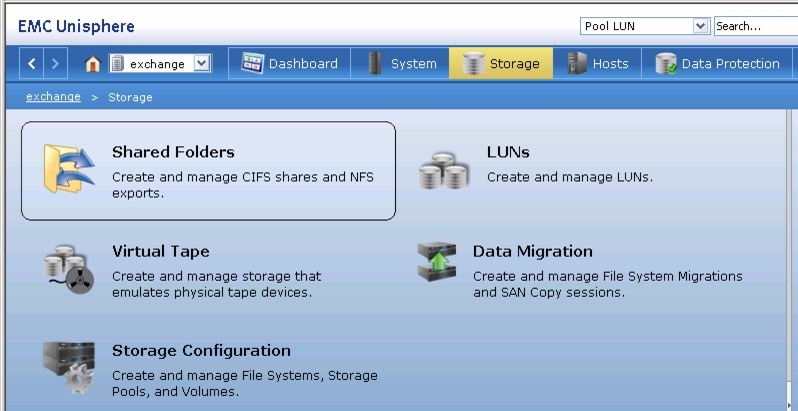

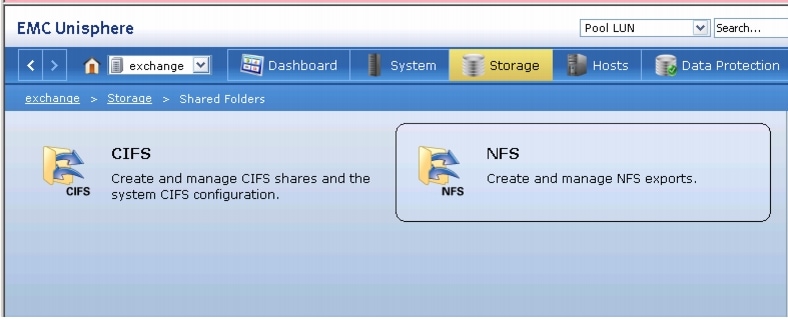

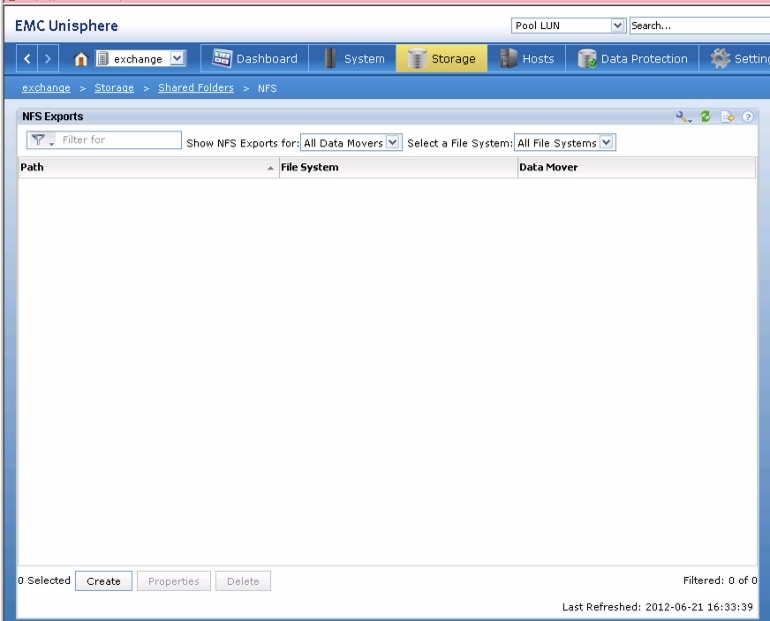

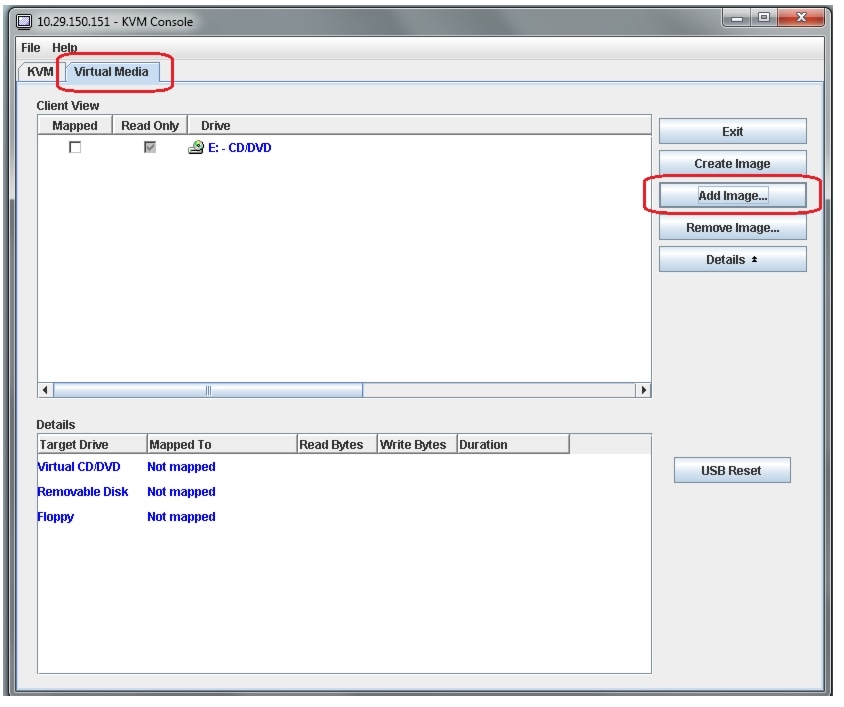

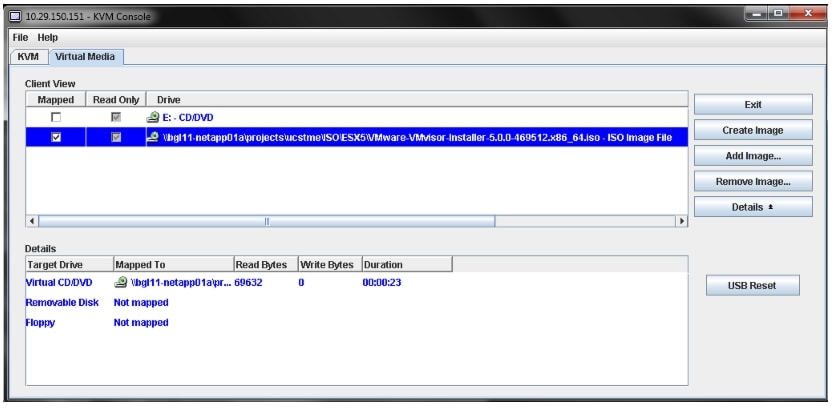

Configuring VNXe3000 series storage arrays

This section covers configuring the storage arrays for the 50 and 100 virtual machines architectures. To configure the storage arrays, follow these steps:

1.

Using a web browser, launch the Unisphere console at the management IP address of the storage array. Enter the user name and password.

2.

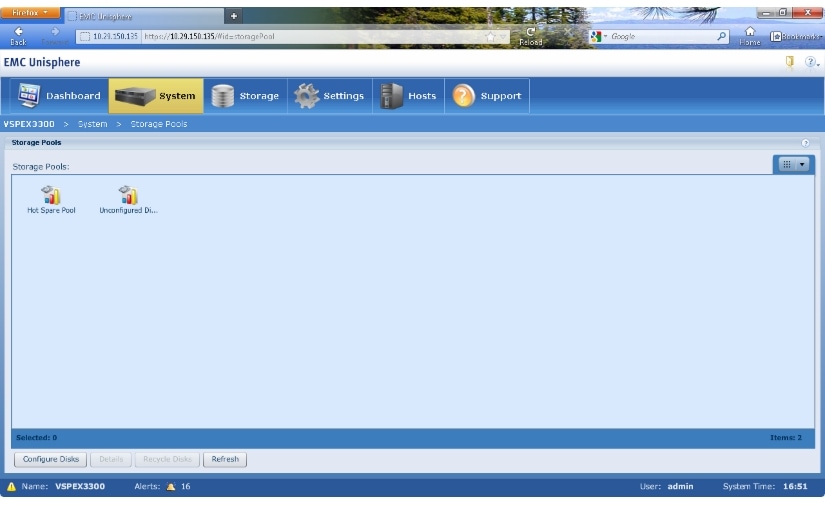

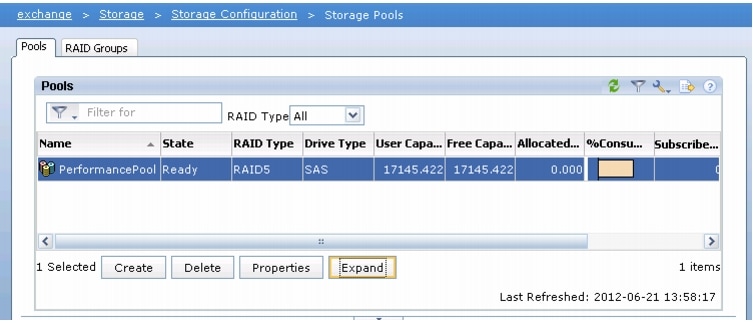

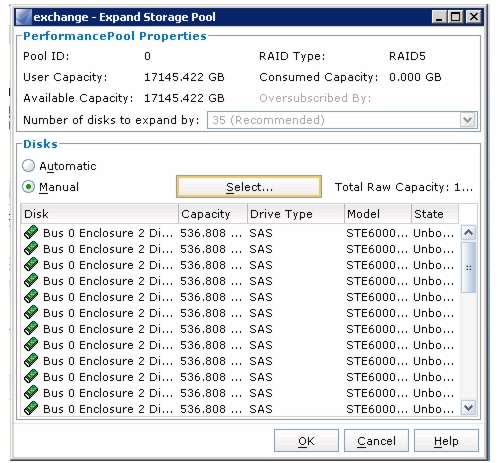

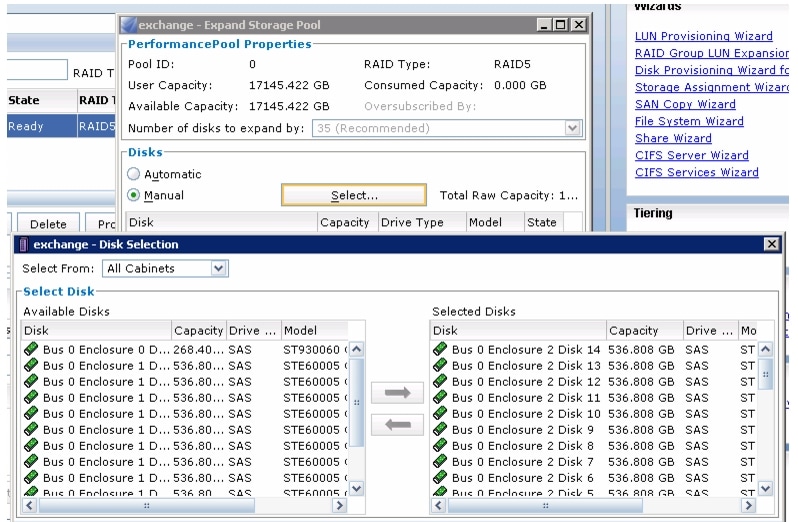

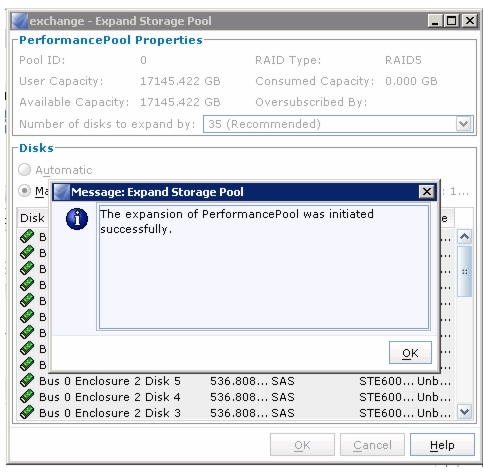

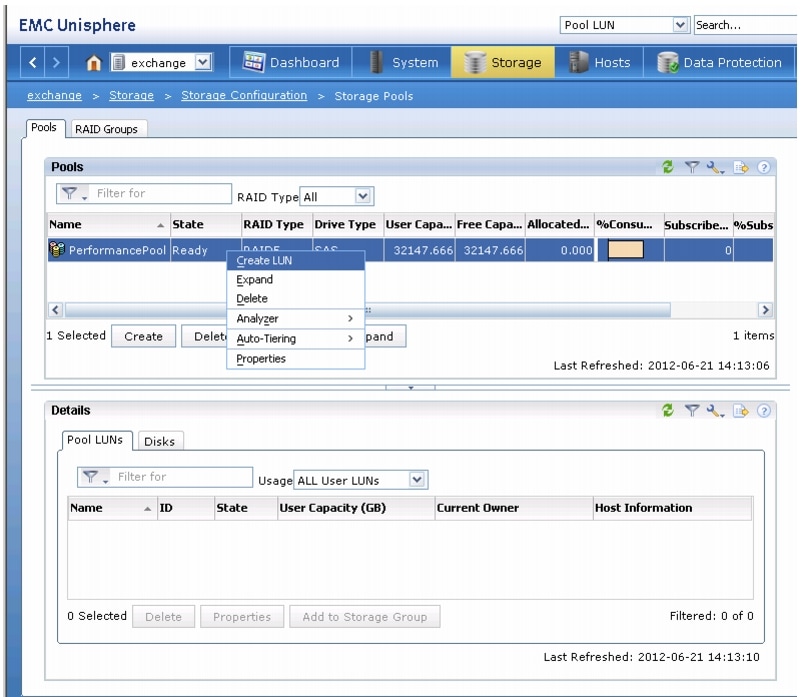

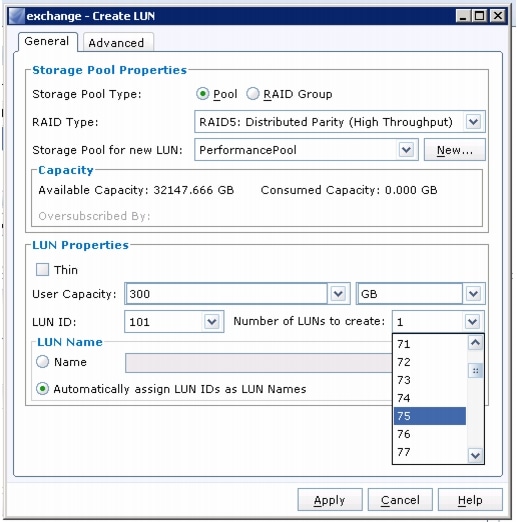

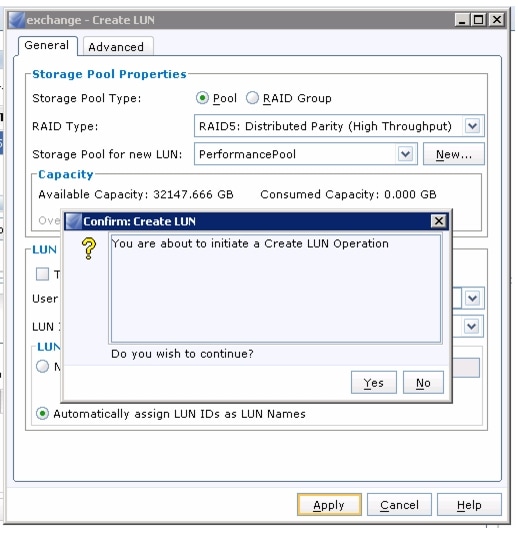

Click System > Storage Pools in the EMC Unisphere window.

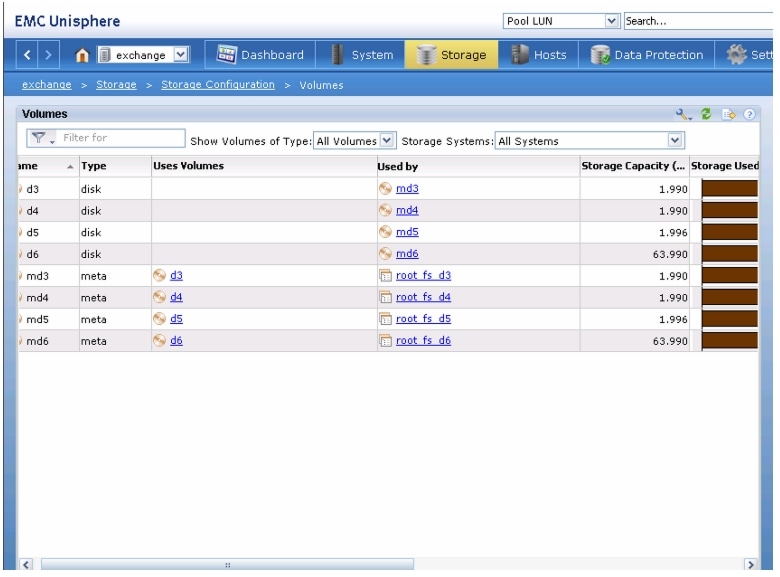

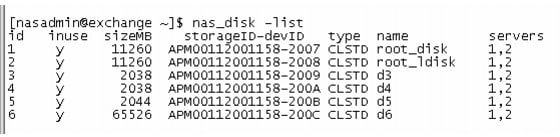

Figure 46 Storage Pools in EMC Unisphere Window

3.

Click Configure Disks.

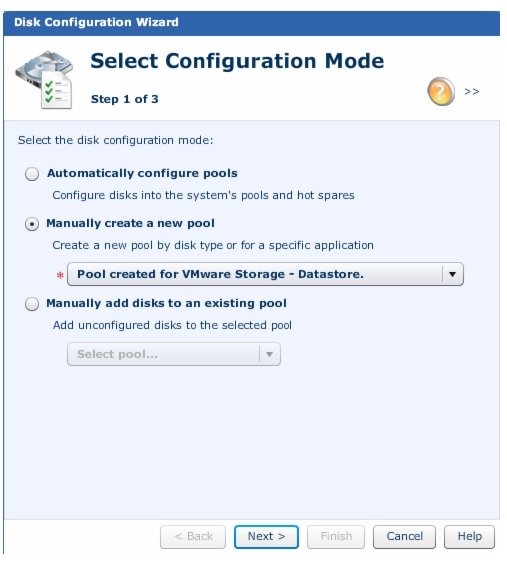

Figure 47 Configuring Disks in EMC Unisphere

4.

Click the "Manually create a new pool" radio button and choose "Pool created for VMware Storage - Datastores" from drop-down list.

Figure 48 Selecting Configuration Mode

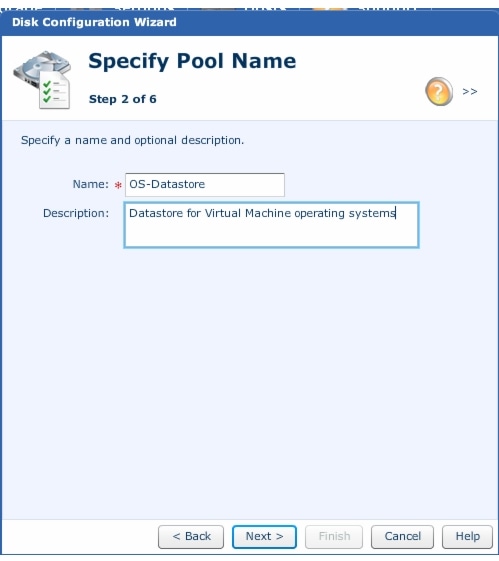

5.

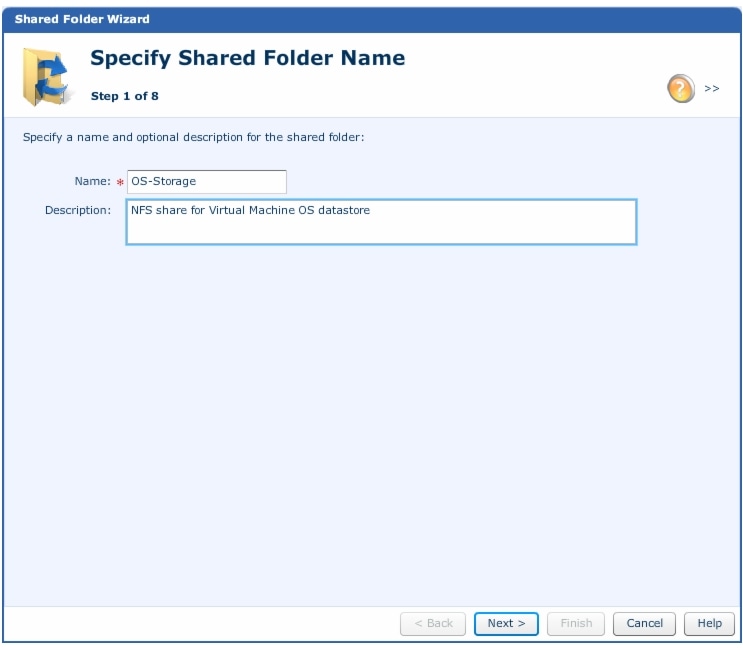

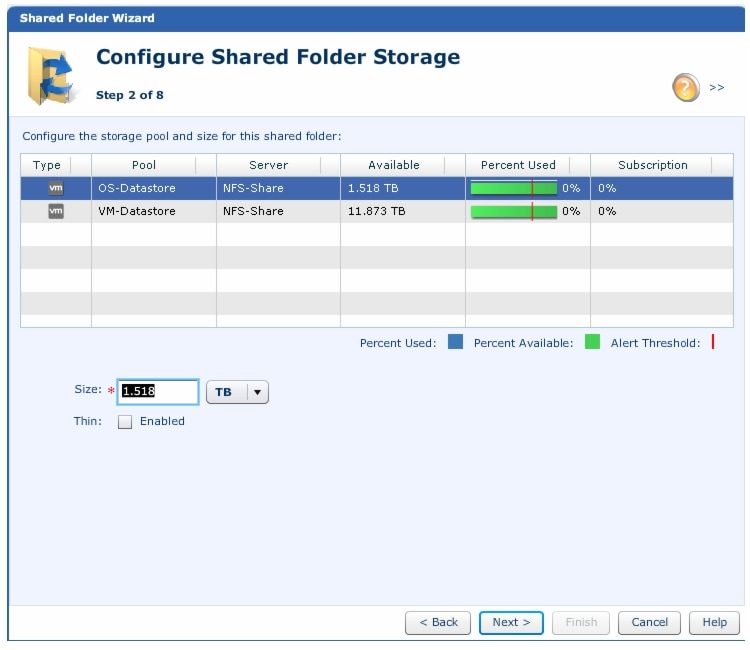

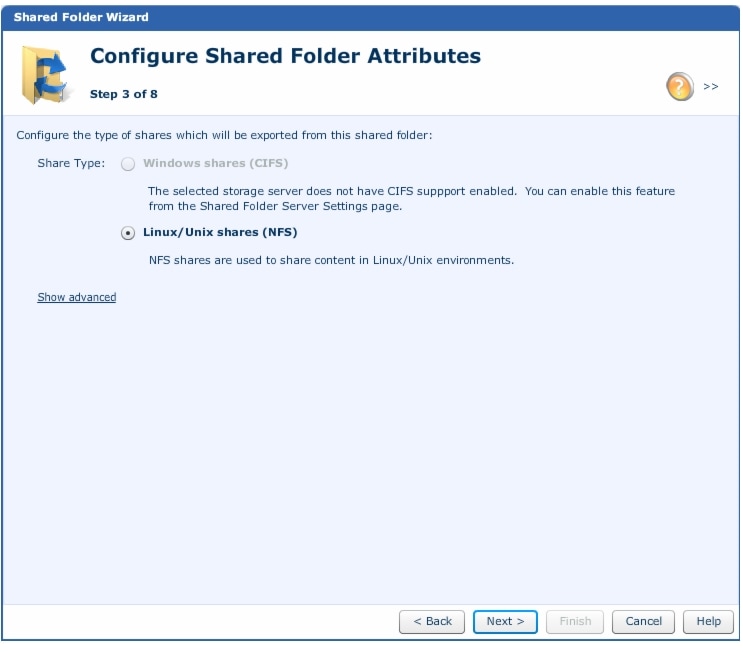

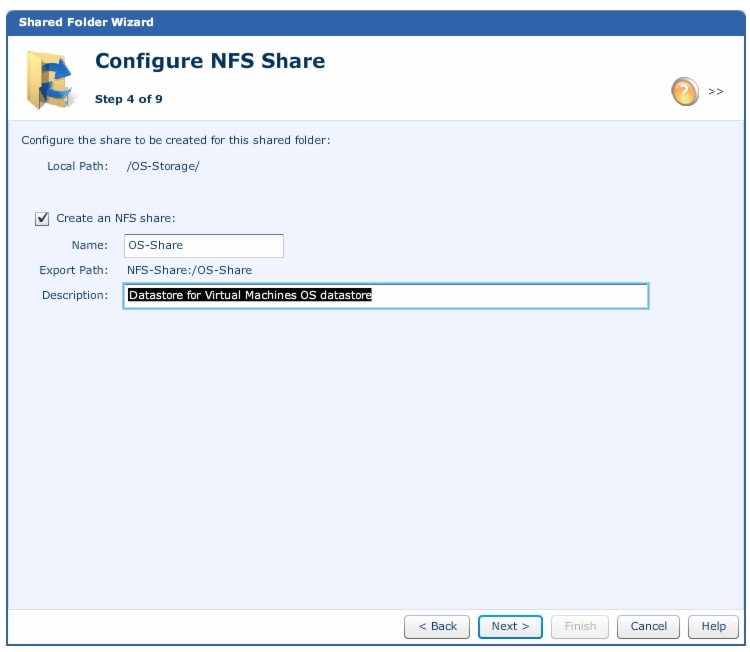

Specify the pool name and provide description in the "Name" and "Description" fields respectively. Click Next.

Figure 49 Specifying Pool Names

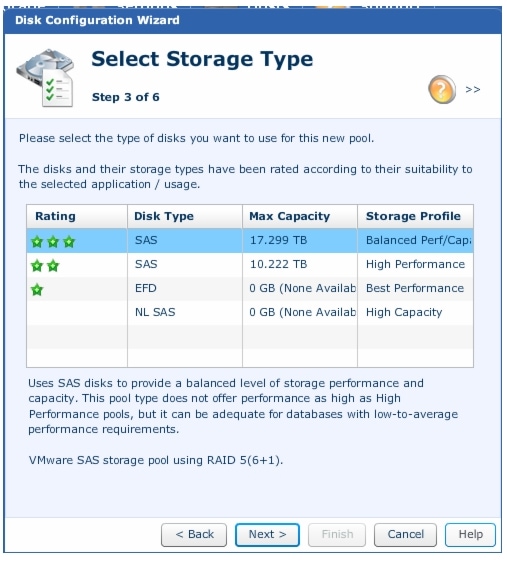

6.

Select SAS for the balanced performance storage profile.

Figure 50 Selecting Required Storage Space

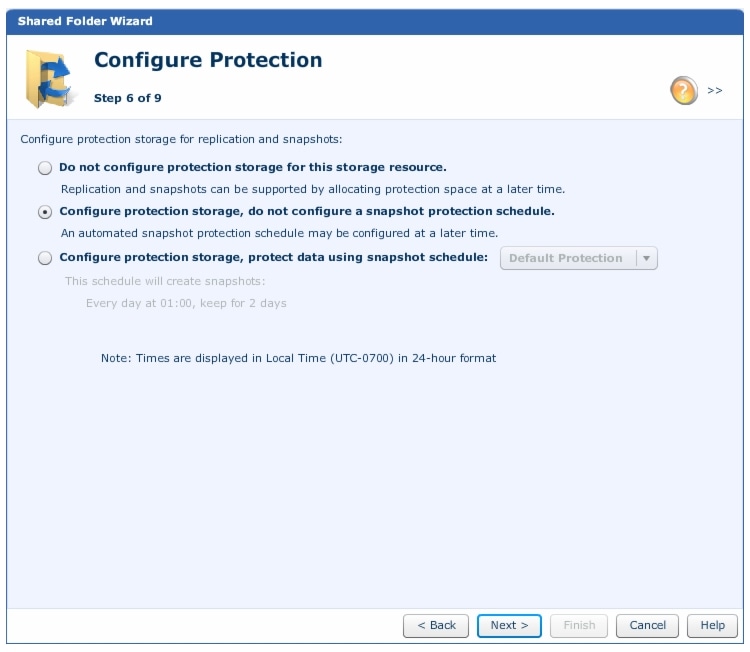

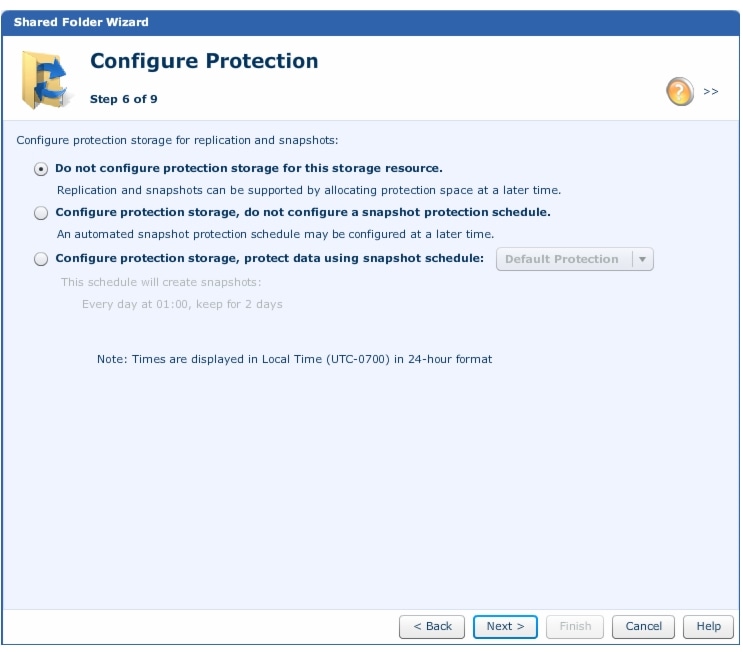

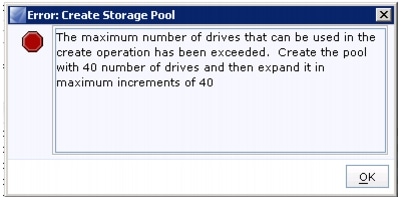

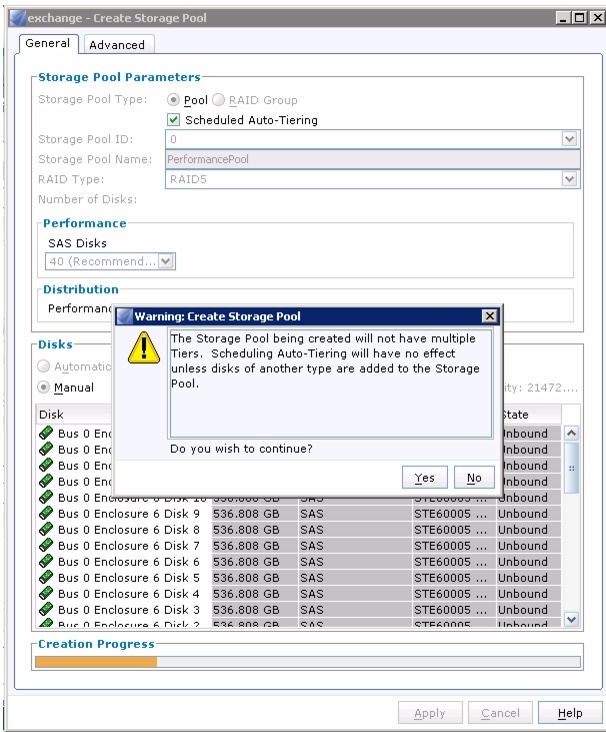

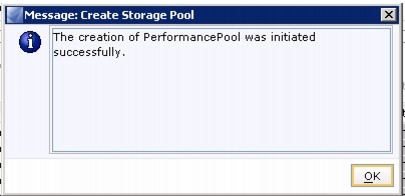

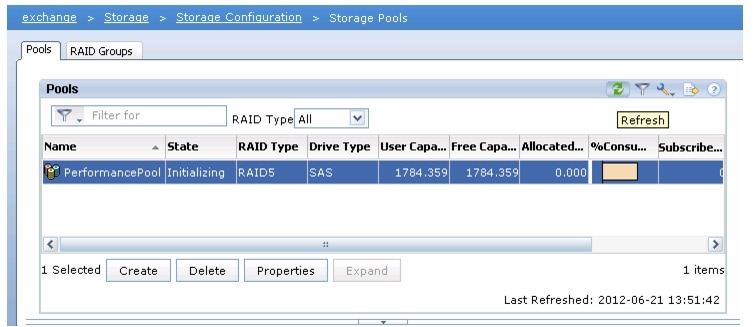

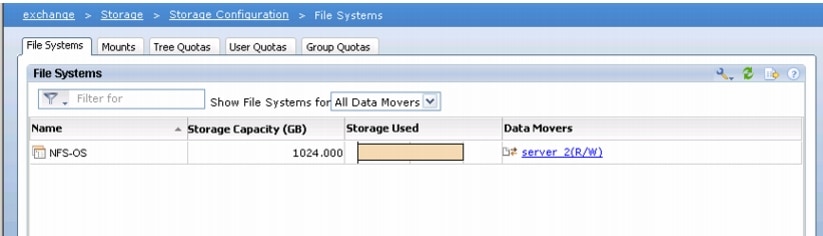

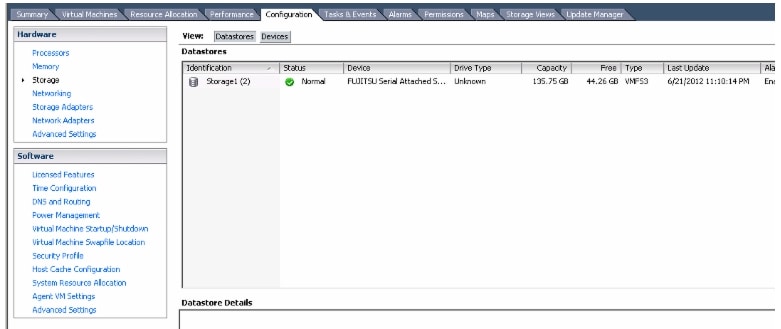

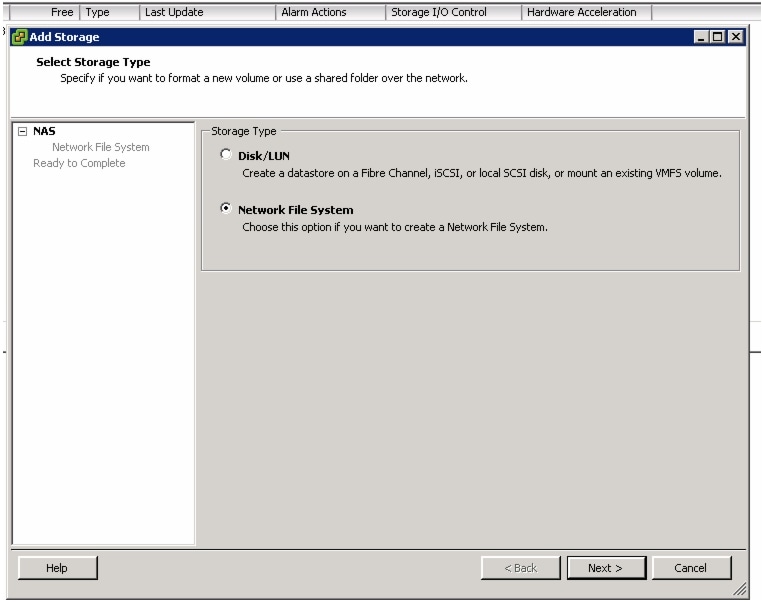

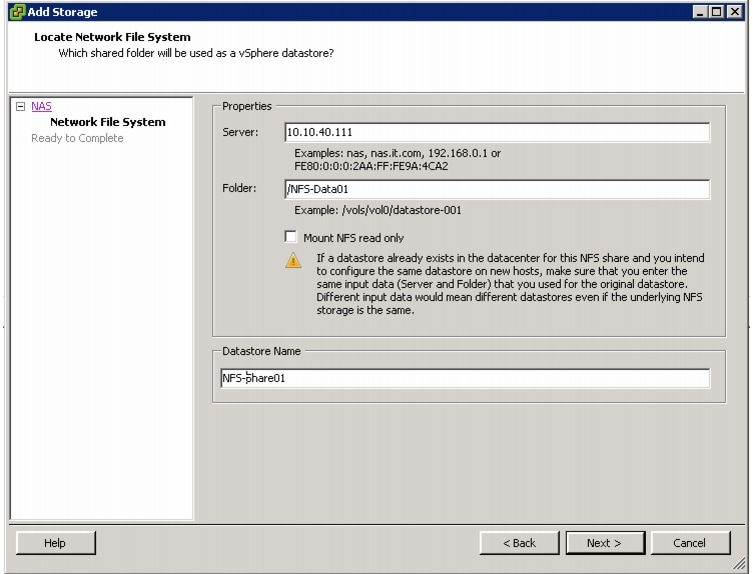

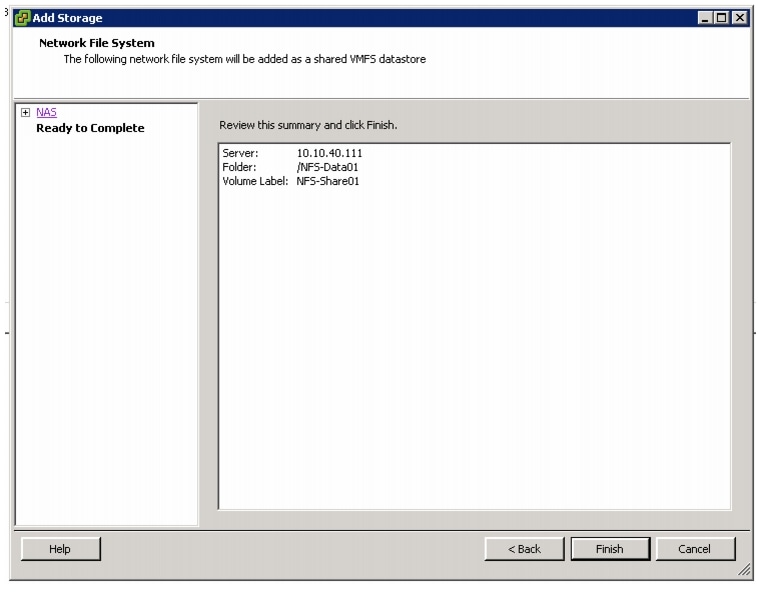

7.