Cisco Prime Performance Manager Quick Start Guide, 1.7

Updated: January 26, 2015

Chapter: Installation Requirements

Cisco Prime Performance 1.7 Installation Requirements

The following topics provide the hardware and software requirements for installing Cisco Prime Performance Manager 1.7:

- New and Revised Information

- Supported Operating Systems

- Client Requirements

- Cisco Prime Interoperability

- Evolved Programmable Networks Requirements

- Requirements for Mobility StarOS Networks

- Code Division Multiple Access Distributed Mobility Scale Requirements

- Requirements for Small Cell Networks

- Requirements for collectd

- Single Server ESXi Hypervisor Requirements

- Single Server Requirements for Data Collection Manager

- Requirements for Managing KVM Deployments

- Requirements for Ceph Deployments

- Requirements for TCP/HTTP Probes

- Requirements for NetFlow

- Linux Update Requirements

- Gateway Requirements Large Number of Units Distributed Scale

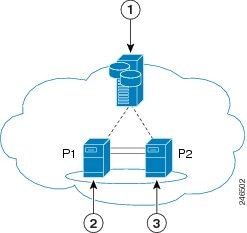

- Gateway High Availability Installation Requirements

- Network Device Configuration

- Prime Performance Manager Ports

- Permissible IPv4 and IPv6 Addresses

- Bandwidth and Latency Requirements

New and Revised Information

Table 1-1 lists new and revised information since the first Prime Performance Manager 1.7 release.

Supported Operating Systems

You can install Prime Performance Manager gateway and unit servers on the following 64-bit Linux versions:

- Red Hat Enterprise Linux 5.0 Advanced Platform Update 5, Update 6, Update 7, Update 8, Update 9, Update 10, Update 11, or CentOS equivalent.

- Red Hat Enterprise Linux 6.0, Update 2, Update 3, Update 4, Update 5, Update 6, Update 8, Update 9, Update 10, or CentOS equivalent.

- Red Hat Enterprise Linux 7.0, 7.1, 7.2, 7.3, 7.4, 7.7, or CentOS equivalent.

- Linux Ubuntu Server 12.04 and 14.04 Release.

- XenApp 6.0

Note: RHEL 6.9 is supported from Release 1.7 Service Pack 1805 and above.

RHEL 7.4 is supported from Release 1.7 Service Pack 1807 and above.

RHEL 6.10 is supported from Release 1.7 Service Pack 1901 and above.

RHEL 7.7 is supported from Release 1.7 Service Pack 2003 and above.

Note: Only 64-bit Linux images are supported; 32-bit Linux images are not supported.

Note: Solaris is not supported.

In addition, the following RPM packages (or later versions) must be installed if they are not already present:

- crontabs-1.10-8

- vixie-cron-4.1-76

- bzip2-libs-1.0.3-4

- ksh-20080202-2

- glibc-2.5-34

- bc-1.06-21

- bind-utils-9.3.4-10

- time-1.7-27

- words-3.0-9

- freetype-2.2.1-21

- fontconfig-2.4.1-7

- expat-1.95.8-8.2.1

- gawk-3.1.5

- net-tools-1.60

If you are running SELinux (Security-Enhanced Linux) and you enabled Prime Performance Manager user access with SSL, run the following command to load the SSL shared library from Prime Performance Manager:

If you are running Red Hat Enterprise Linux Version 6 Update 2 or later:

Package collections -> Compatibility Libraries

If you will use Prime Performance Manager to manage hypervisors, after installing Prime Performance Manager, run the ppm hypervisor checklibrary command to determine if all required shared libraries are installed:

Missing libraries are listed as “not found”.

Common RPM packages that must be installed—but might not be by default—include:

- cyrus-sasl-lib

- cyrus-sasl-md5

- e2fsprogs-libs

- keyutils-libs

- krb5-libs

- libgcrypt

- libgpg-error

- libidn

- libselinux

- libsepol

- openldap

- compat-openldap

- zlib

- openssl

- openssl-0.9.8e (for RedHat Linux Version 5)

- openssl098e (for RedHat Linux Version 6)

- openssl098e (for RedHat Linux Version 7)

If you are integrating with LDAP using PAM, the following RPMs are required:

Libraries for PAM_LDAP are provided with the Linux distribution with no support from Cisco. Any issues with PAM_LDAP in a specific deployment are beyond the scope of Cisco support.

To verify the RPMs that are installed on your system, enter:

Check for the packages listed above or more recent versions. To get RPMs for Red Hat Enterprise Linux, contact your Red Hat representative or download them from the Red Hat website at: http://www.redhat.com.
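As a minimal sketch of such a check (an illustration, not the command from the original guide), the snippet below compares the set of installed package names against some of the package names listed earlier. It assumes the names are gathered with the standard `rpm -qa --queryformat '%{NAME}\n'` query. The names `REQUIRED_PACKAGES`, `installed_package_names`, and `missing_packages` are hypothetical, and version comparison is deliberately left out.

```python
import subprocess

# Package names taken from the lists above (versions omitted; this sketch
# checks presence only, not whether the installed version is recent enough).
REQUIRED_PACKAGES = [
    "crontabs", "bzip2-libs", "ksh", "glibc", "bc", "bind-utils", "time",
    "words", "freetype", "fontconfig", "expat", "gawk", "net-tools",
]


def installed_package_names():
    """Return the set of installed RPM names by calling `rpm -qa`."""
    out = subprocess.run(
        ["rpm", "-qa", "--queryformat", "%{NAME}\n"],
        capture_output=True, text=True, check=True,
    ).stdout
    return set(out.split())


def missing_packages(installed, required=REQUIRED_PACKAGES):
    """Return the required package names that are not in `installed`."""
    return [name for name in required if name not in installed]
```

On an RPM-based server, `missing_packages(installed_package_names())` would return the names that still need to be installed. Package names can differ between Red Hat releases, so treat the list as a starting point.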

Note: Downloading Red Hat Enterprise Linux RPMs might require a support agreement.

Ubuntu Requirements

Prime Performance Manager supports Ubuntu 12.04 and later with the following guidelines:

- The libssl0.9.8 package must be installed (apt-get install libssl0.9.8).

- All required Red Hat packages must be installed. To verify whether the packages are installed on your server, enter:

VM Environments

You can install and run Prime Performance Manager in a VM environment as long as the VM meets the equivalent requirements provided in the following sections. VM releases supported as both runtime environments and as targets for monitoring by Prime Performance Manager include:

HyperV and Xen are only supported as targets for monitoring by Prime Performance Manager. They have not been tested as runtime environments.

Note: Run Prime Performance Manager on a VM only for POC or medium-size networks. For large networks and higher, run Prime Performance Manager on bare metal servers with direct disk access. (For network sizes, see Table 1-2.)

Client Requirements

Prime Performance Manager clients do not require a separate installation. Clients access Prime Performance Manager using a web browser. The following browsers are supported:

- Microsoft Internet Explorer 9.0 or greater

- Firefox 24 or later up to Firefox 55

- Firefox 24 Extended Support Release (ESR) or later up to Firefox 52 ESR

Later versions of Internet Explorer and Firefox, as well as Safari and Chrome, are not formally tested but are widely used without problems.

Cisco Prime Interoperability

Prime Performance Manager 1.7 can interoperate with the following Cisco Prime products:

- Cisco Prime Central 1.3, 1.4, 1.4.1, 1.5, 1.5.1, 1.5.2, 1.5.3, 2.0, and 2.1

- Cisco Prime Network 3.10, 3.11, 4.0, 4.1, 4.2, 4.2.1, 4.2.2, 4.2.3, 4.3.0, 4.3.1, 4.3.2, 5.0, 5.1, 5.2, and 5.3

- Cisco Prime Service Catalog 10.1

- Cisco Prime Network Service Controller 3.2 and 3.3

If you integrate Prime Performance Manager 1.7 with Prime Carrier Management, integration is only allowed with the following Prime Central and Prime Network releases:

- Prime Central 2.1 with Prime Network 5.3 and Prime Performance 1.7 SP2003 and above

- Prime Central 2.1 with Prime Network 5.2 and Prime Performance 1.7 SP1909 and above

- Prime Central 2.1 with Prime Network 5.1 and Prime Performance 1.7 SP1807 and above

- Prime Central 2.0 with Prime Network 5.0 and Prime Performance 1.7 SP1805 and above

- Prime Central 1.5.3 with Prime Network 4.2.1, 4.2.2, 4.2.3, 4.3.0, 4.3.1, 4.3.2 and Prime Performance 1.7 SP1705 and above

- Prime Central 1.5.1 and 1.5.2 with Prime Network 4.2, 4.2.1, 4.2.2, 4.2.3, 4.3 and Prime Performance 1.7 SP4 and above

- Prime Central 1.5 with Prime Network 4.2, 4.2.1, 4.2.2, 4.2.3 and Prime Performance 1.7

- Prime Central 1.4.1 with Prime Network 4.2.1 and Prime Performance 1.7

- Prime Central 1.4 with Prime Network 4.2 and Prime Performance 1.7

- Prime Central 1.3 and Prime Network 4.1

Evolved Programmable Networks Requirements

For Evolved Programmable Networks (EPNs), the Prime Performance Manager gateway and units can be installed on the same server (single deployment) or on separate servers (distributed deployment). Single deployments are permitted for proof of concept (POC), medium, large, and very large networks when high availability will not be configured. Distributed deployments are required if you plan to configure high availability or your network is in the extremely large or maximum supported categories. Server and operating system requirements depend on your network size. Network sizes are based on maximum numbers for the following:

- Devices

- Layer 3 pseudowires (PWE3s)

- Interfaces—The number of device interface table entries. This number is usually higher than the number of physical ports because it includes pseudowires, virtual circuits, VLANs, and other virtual interfaces.

- Interfaces generating statistics: The number of interfaces generating statistics. This category excludes DS1, DS3, SONET, SDH, and ATM layers that do not return valid statistics.

Table 1-2 shows the maximums used for the Prime Performance Manager server requirements for single and distributed deployments.
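The deployment rule described above can be sketched as a small lookup. This is only an illustration of the stated rule; the size labels and the function name `deployment_options` are hypothetical and are not Prime Performance Manager settings.

```python
# Network size categories named in the text, grouped by the deployment rule.
SINGLE_ALLOWED_SIZES = {"poc", "medium", "large", "very large"}
DISTRIBUTED_ONLY_SIZES = {"extremely large", "maximum supported"}


def deployment_options(network_size, high_availability):
    """Return the deployment types permitted for a network size and HA choice."""
    size = network_size.lower()
    if size not in SINGLE_ALLOWED_SIZES | DISTRIBUTED_ONLY_SIZES:
        raise ValueError(f"unknown network size: {network_size}")
    # High availability or the two largest categories require distributed.
    if high_availability or size in DISTRIBUTED_ONLY_SIZES:
        return ["distributed"]
    return ["single", "distributed"]


print(deployment_options("large", high_availability=False))
print(deployment_options("large", high_availability=True))
```

The first call permits either deployment type; the second returns only the distributed option because high availability is requested.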


Additionally, all EPN systems use the following device ratio model per 5,000 devices:

- Cisco ASR 1002: 1 (Route Reflector)

- Cisco ASR 9006: 159 (Aggregation/SEN)

- Cisco Catalyst 4506: 1067 (Access)

- Cisco Catalyst 3750E: 1067 (Access)

EPN requirements are based on 200 simultaneous user queries using GUI and REST API data access, with 200 queries initiated every 10 seconds and sustained for 90 minutes, and on the following reports:

- CPU 5 Min Average Utilization 15 Minute

- PWE3 Packet Rate 15 Minute (GUI)

- PWE3 Dashboard 15 Minute (REST)

- Memory Pool Utilization 15 Minute

- ICMP Ping Response Time 15 Minute

- Interface Availability 15 Minute

- SNMP/Hypervisor Availability 15 Minute

- Interface Errors 15 Minute

- Interface Utilization 15 Minute

- Interface Admin / Operational Status Percentage 15 Minute (GUI)

- Interface Operational Status Percentage 15 Minute (REST)

Additional Requirements Notes

In addition to requirements provided in the preceding and following sections, review the following notes before you install Prime Performance Manager:

Input/Output Operations Per Second

All IOPS and write operation metrics are collected by the Linux IOSTAT tool and computed as a 95th percentile metric.

For large networks or higher, format the Linux file systems for the following Prime Performance Manager directories to accommodate large file support: data, device cache, backup. You normally do this by adding the -T option to the mkfs.ext3 command:

This enables 1 MB block data allocations per inode instead of the default 4 KB or 16 KB of block data per inode. To verify, use the following command: tune2fs -l /dev/{raidDeviceName} | grep group. The following formula computes the current value: (blocks per group/inodes per group) * block size.
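As a worked illustration of that formula, the sketch below reads the three relevant fields from `tune2fs -l` output and computes the bytes of block data per inode. The function name `bytes_per_inode` is hypothetical, and the sample text uses made-up values in the `tune2fs -l` field layout; note that the block size line does not contain the word "group", so it has to be read from the full output rather than from the grep shown above.

```python
import re


def bytes_per_inode(tune2fs_output):
    """Compute (blocks per group / inodes per group) * block size."""
    def field(label):
        # Match a line such as "Blocks per group:         32768".
        match = re.search(
            rf"^{re.escape(label)}:\s*(\d+)\s*$", tune2fs_output, re.MULTILINE
        )
        if match is None:
            raise ValueError(f"field not found: {label}")
        return int(match.group(1))

    return (
        field("Blocks per group") / field("Inodes per group") * field("Block size")
    )


# Illustrative values only, not taken from a real system.
SAMPLE = """\
Block size:               4096
Blocks per group:         32768
Inodes per group:         128
"""
print(bytes_per_inode(SAMPLE))
```

With these sample values the result is 1048576 bytes, that is, 1 MB of block data per inode.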

Prime Performance Manager CSV export is disabled by default. This means CSV report files are not included in the gateway backup file. However, the server requirements provided in the following sections assume that CSV export is enabled and that CSV report files are included in the gateway backup file. The exception is Maximum Supported Scale Distributed, which assumes CSV export is disabled.

When discovering network devices, verify the banner page does not include a # character. This character will be interpreted as an enable prompt and cause a CLI/HTTP device access problem.

Server Requirements for Single Deployments

In a single Prime Performance Manager deployment, the gateway and unit are installed on the same server. Server requirements are based on the following criteria:

- Reports—CPU, memory, interface, PWE3s, SNMP and Hypervisor Ping, ICMP Ping.

- The following report aging values were used to establish a baseline for computing disk space requirements:

All EPN system requirements assume:

Increasing retention time requires additional disk space. Smaller networks can increase the retention time easily in most cases. Larger networks require capacity planning for disk space usage.

For single-server deployments, the following additional disk space is required for every 1000 devices averaging 300 interfaces or PWE3s that can generate statistics for each additional day of retention for 15-minute and hourly reports:

- 1.4 GB for database and reports when CSV export is disabled.

- 3.2 GB for backups when CSV export is disabled or CSV report files are not included in the backup file.

- 2.0 GB for database and reports when CSV export is enabled.

- 4.2 GB for backups when CSV export is enabled and CSV report files are included in the backup file.
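The single-server figures above can be turned into a rough estimate. The sketch below assumes the requirement grows linearly with device count and with extra retention days, and that devices average about 300 statistics-generating interfaces or PWE3s as stated. The table and function names are illustrative only.

```python
# GB per 1000 devices per additional retention day (single-server figures above).
SINGLE_SERVER_GB = {
    # csv_enabled: (database and reports, backups)
    False: (1.4, 3.2),
    True: (2.0, 4.2),
}


def extra_disk_gb(devices, extra_days, csv_enabled):
    """Return (database_gb, backup_gb) of additional disk space."""
    db_rate, backup_rate = SINGLE_SERVER_GB[csv_enabled]
    # Assumption: linear scaling in both devices and retention days.
    scale = devices / 1000 * extra_days
    return db_rate * scale, backup_rate * scale


db_gb, backup_gb = extra_disk_gb(devices=2000, extra_days=7, csv_enabled=True)
print(f"database/reports: {db_gb:.1f} GB, backups: {backup_gb:.1f} GB")
```

For 2,000 devices and seven extra days with CSV export enabled, this estimates about 28 GB for the database and reports and about 58.8 GB for backups.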

For distributed deployments, for every 1000 devices where each device has an average of 300 interfaces or PWE3s that can generate statistics, each standalone gateway or unit requires the following total disk space per additional day of retention of 15-minute and hourly reports:

- 18 MB for database and reports on the gateway when CSV export is disabled.

- 58 MB for backups on the gateway when CSV export is disabled or CSV report files are not included in the gateway backup file.

- 560 MB for the database and reports on the gateway when CSV export is enabled.

- 1.16 GB for backups on the gateway when CSV export is enabled and CSV report files are included in the gateway backup file.

- 1.4 GB for database and reports on units.

- 4.6 GB for backups on units.
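For distributed deployments the same arithmetic applies per server, with different rates for gateways and units. A hedged sketch, assuming linear scaling and 1 GB = 1024 MB (the guide does not say which convention it uses); the names `GATEWAY_MB`, `UNIT_MB`, and `extra_disk_mb` are hypothetical:

```python
MB_PER_GB = 1024  # assumption: the guide does not state which GB it uses

# MB per 1000 devices per additional retention day (distributed figures above).
GATEWAY_MB = {
    False: {"database": 18, "backups": 58},                # CSV export disabled
    True: {"database": 560, "backups": 1.16 * MB_PER_GB},  # CSV export enabled
}
# The list above gives a single set of figures for units.
UNIT_MB = {"database": 1.4 * MB_PER_GB, "backups": 4.6 * MB_PER_GB}


def extra_disk_mb(role, devices, extra_days, csv_enabled=False):
    """Return additional MB for one server; role is 'gateway' or 'unit'."""
    if role == "gateway":
        rates = GATEWAY_MB[csv_enabled]
    elif role == "unit":
        rates = UNIT_MB
    else:
        raise ValueError(f"unknown role: {role}")
    scale = devices / 1000 * extra_days
    return {name: rate * scale for name, rate in rates.items()}


print(extra_disk_mb("gateway", devices=5000, extra_days=1))
print(extra_disk_mb("unit", devices=5000, extra_days=1))
```

The comparison makes the point of the figures visible: with CSV export disabled, almost all of the added storage lands on the units rather than on the gateway.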

Note: Adding retention days to daily, weekly, and monthly reports does not impact disk space as significantly as adding retention days to 15-minute and hourly reports. Approximately 98% of disk space usage comes from the 15-minute and hourly reports.

- Hardware or ZFS RAID is recommended on production systems for the partitions that store the database, reports, and backups.

For information on Cisco UCS Server options, see: http://www.cisco.com/web/mobile/products/ucs/cseries/docs.html

- Depending on your network size and server performance, tuning the gateway and unit server JVM heap size is recommended. For high-scale environments, increasing the gateway and unit server JVM heap size to 8-16 GB is recommended. You can change the JVM heap size using the ppm jvmsize command.

The following topics provide single deployment server requirements:

Proof of Concept Single Deployment Installation Requirements

Table 1-3 lists the proof of concept network single deployment Linux server requirements. (See Table 1-2 for network size details.)


Medium Network Single Deployment Server Requirements

- Number of devices—2,000

- Number of PWE3 links— 124,000

- Number of Interfaces—376,000

- Number of Interfaces with stats—209,000

Table 1-4 lists the medium network single deployment Linux server requirements. (See Table 1-2 for network size details.)

Large Network Single Deployment Server Requirements

Table 1-5 shows the large network Linux server requirements. (See Table 1-2 for network size details.)

Very Large Network Single Deployment Server Requirements

Table 1-6 shows the very large network Linux server requirements. (See Table 1-2 for network size details.) The following report aging values were used to establish a baseline for computing disk space requirements:

- 5-minute reports - disabled

- 15-minute reports - 7 days

- Hourly reports - 14 days

- Daily reports - 94 days

- Weekly reports - 730 days

- Monthly reports - 1825 days

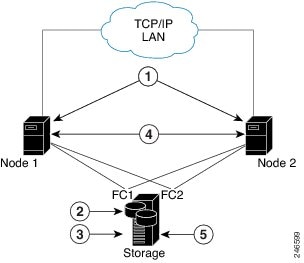

Server Requirements for Distributed Deployment Installations

In a Prime Performance Manager distributed deployment, the gateway and units are installed on different servers. Server requirements are based on the following criteria:

For distributed deployments, for every 1000 devices averaging 300 interfaces or PWE3s that can generate statistics, each standalone gateway or unit requires the following total disk space per additional day of retention of 15-minute and hourly reports:

– 20 MB for database and reports when CSV export is disabled.

– 60 MB for backups when CSV export is disabled or CSV report files are not included in the backup file.

– 580 MB for database and reports when CSV export is enabled.

– 1.2 GB for backups when CSV export is enabled and CSV report files are included in the backup file.

– 1.5 GB for database and reports.

Note: Adding retention days to daily, weekly, and monthly reports does not impact disk space as significantly as adding retention days to 15-minute and hourly reports.

- Hardware or ZFS RAID is recommended on production systems for the partitions that store the database, reports, and backups.

- Depending on your network size and server performance, tuning the gateway and unit server JVM heap size is recommended. For high scale environments, increasing gateway and unit JVM heap size to 8-24 GB is recommended. You can change the JVM size using the ppm jvmsize command.

The following topics provide the distributed network server requirements:

Large Network Distributed Deployment Server Requirements

- Number of Devices—5,000

- Number of PWE3 Links—270,380

- Number of Interfaces—815,260

- Number of Interfaces with Stats—489,500

Gateway with CSV Exporting All Data to Gateway Enabled

- Cisco UCS C240 M3 or Cisco UCS C220 M3 for non-NEBs-compliant systems

- Cisco UCS B200 M3 for non-NEBs-compliant systems (external storage only)

Linux Version From Supported List

– UCS C240 M3 Xeon E5-2680 2.7 GHz

– UCS B200 M3 Xeon E5-2680 2.7 GHz

– UCS C240 M3 Xeon E5-2670 2.6 GHz

– UCS C240 M3 Xeon E5-2650v2 2.6 GHz

– UCS C220 M3 Xeon E5-2680 2.7 GHz

– UCS C220 M3 Xeon E5-2670 2.6 GHz

– UCS C220 M3 Xeon E5-2650v2 2.6 GHz

Cisco UCS C240 M3 Performance and Reliability Option

- 1*300 GB SAS 10K RPM drive for OS

- 4*300 GB SAS 10K RPM drives for CSV reports using RAID10

- 4*300 GB SAS 10K RPM drives for backups using RAID10

- Two PCIe RAID controllers

- Dual Power Supply

Cisco UCS C240 M3/C220 M3 Performance Option

- 1*300 GB SAS 15K RPM drive for OS and Prime Performance Manager

- 2*300 GB SAS 15K RPM drives for CSV reports using RAID0

- 2*300 GB SAS 15K RPM drive for backups using RAID0

- One embedded RAID, Mezzanine RAID, or PCIe RAID controller

External Storage Options and Performance Requirements

- Linux OS and Prime Performance Manager gateway installation and database

- 10 GB used

- 125 IOPS with nearly 66% write operations

- Driven by Prime Performance Manager logs and database

- Prime Performance Manager gateway export report

- 115 GB used

- 500 IOPS with 20% write operations

- Burst read export report files during backup periods

- 104 GB disk space used for export report based on below aging

- 9 GB export report data generated per day

- Average report data generation rate is about 6.4 MB per minute

- 15-minute reports - 7 Days - 7.14 GB disk usage per day

- Hourly reports - 14 Days - 1.79 GB disk usage per day

- Daily reports - 94 Days - 0.07 GB disk usage per day

- Weekly reports - 730 Days - 0.07 GB disk usage per week

- Monthly reports - 1825 Days - 0.07 GB disk usage per month

- Gateway export report partition size:

– Gateway export report size above

- Prime Performance Manager gateway backups including export report

- 245 GB used

- 620 IOPS with nearly 58% write operations

- Sustained IOPS during backup periods; quiet at other times

- Gateway backup file size:

– Gateway export report size above +

– 4 GB for device cache/gateway database/other files

– Gateway backup file size * (backup days + 1) +

– 4 GB for device cache/gateway database/other files

- Cisco UCS C240 M3 or Cisco UCS C220 M3 for non-NEBs-compliant systems

- Cisco UCS B200 M3 for non-NEBs-compliant systems (external storage only)

- Supported Linux version

- CPU type—8-core (UCS B200 M3):

- CPU—2

- vCPU—8 or more for VM environments

- RAM—48 GB or greater

- Swap space—32 GB or greater

- Unit JVM—8 GB

– 2*300 GB SAS 15K RPM drive for OS and Prime Performance Manager using RAID0

– 6*300 GB SAS 15K RPM drives for database using RAID10

– 8*300 GB SAS 15K RPM drives for backups using RAID10

– 2*300 GB SAS 15K RPM drive for OS and Prime Performance Manager using RAID0

– 4*300 GB SAS 15K RPM drives for database using RAID10

– 6*300 GB SAS 15K RPM drive for backups using RAID0

– 2*146 GB SAS 15K RPM drive for OS and Prime Performance Manager using RAID0

– 2*300 GB SAS 15K RPM drives for database using RAID0

– 3*300 GB SAS 15K RPM drive for backups using RAID0

– 2*146 GB SAS 15K RPM drive for OS and Prime Performance Manager using RAID0

– 2*300 GB SAS 15K RPM drives for database using RAID0

– 2*600 GB SAS 15K RPM drive for backups using RAID0

– One embedded RAID, Mezzanine RAID, or PCIe RAID controller

External Storage Options and Performance Requirements

– Linux OS and Prime Performance Manager unit installation

– 352 IOPS with 84% write operations

– Driven by Prime Performance Manager device cache writes and logs

– Device cache and logs can be relocated if necessary

Prime Performance Manager unit database

– 620 IOPS with 58% write operations

– 223 GB disk space used for database based on below aging

– 19 GB report data generated per day

– Average report data generation rate is about 13.51 MB per minute

– 15-minute reports - 7 Days - 15.07 GB disk usage per day

– Hourly reports - 14 Days - 3.77 GB disk usage per day

– Daily reports - 94 Days - 0.16 GB disk usage per day

– Weekly reports - 730 Days - 0.16 GB disk usage per week

– Monthly reports - 1825 Days - 0.16 GB disk usage per month

– Unit database Partition size: Unit database size above; multiplied by 1.1 for safety

– Prime Performance Manager unit backups

– 1326 IOPS with nearly 68% write operations

– Sustained IOPS during backup periods; quiet at other times

– 6 GB For device cache/other files

– Unit backup file size above * (backup days + 1) +
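The per-minute generation rates quoted in this section follow from the daily volumes. The sketch below shows that arithmetic, assuming 1 GB = 1024 MB, a convention the guide does not state but which is consistent with the quoted figures. The function names are illustrative.

```python
def gb_per_day_to_mb_per_minute(gb_per_day):
    """Convert a daily data volume in GB to an average rate in MB per minute."""
    # Assumption: 1 GB = 1024 MB; a day has 24 * 60 minutes.
    return gb_per_day * 1024 / (24 * 60)


def daily_volume_gb(fifteen_minute, hourly, daily):
    """Sum the per-day disk usage of the report types that are quoted per day.

    Weekly and monthly reports are quoted per week and per month in the
    lists above, so they are not part of this daily sum.
    """
    return fifteen_minute + hourly + daily


gateway_daily = daily_volume_gb(7.14, 1.79, 0.07)  # gateway export reports
unit_daily = daily_volume_gb(15.07, 3.77, 0.16)    # unit database

print(round(gateway_daily, 2), round(gb_per_day_to_mb_per_minute(9), 2))
print(round(unit_daily, 2), round(gb_per_day_to_mb_per_minute(19), 2))
```

The daily sums come to about 9 GB and 19 GB, and the converted rates to about 6.4 MB and 13.51 MB per minute, matching the gateway and unit figures listed above.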

Very Large Network Distributed Deployment Server Requirements

Table 1-7 shows the very large network gateway server requirements.

Table 1-8 shows the very large network server requirements for a unit with 5,000 devices.

Extremely Large Network Distributed Deployment Server Requirements

Table 1-9 shows the extremely large network gateway server requirements.

Table 1-10 shows the extremely large network server requirements for a unit with 10,000 devices.

Table 1-11 shows the extremely large network server requirements for a unit with 5,000 devices.

Extremely Large Network Distributed Deployment Server Requirements with 5-Minute Reports Enabled

Enabling 5-minute reports requires additional server capacity and power. Table 1-12 shows the extremely large network gateway server requirements when 5-minute reports are enabled. The aging for 5-minute reports is three days.

Table 1-13 shows the extremely large network server requirements for a unit with 10,000 devices:

Maximum Supported Scale Distributed Deployment Server Requirements

Server requirements for maximum supported scale in a distributed deployment include:

- Gateway with CSV data exports disabled.

- Report aging values used to establish disk space requirements

Note: Exporting data from units to CSVs on the gateway is not supported in this deployment. Therefore, less memory is required on the central gateway.

Table 1-14 shows the maximum supported scale gateway server requirements with CSV export disabled.

Table 1-15 shows the maximum supported scale server requirements for a unit with 12,500 devices:

Requirements for Mobility StarOS Networks

Server requirements for Cisco StarOS mobility networks are provided in the following sections. Disk space requirements are based on the following report aging values:

- 5-minute reports - 3 Days

- 15-minute reports - 7 Days

- Hourly reports - 14 Days

- Daily reports - 94 Days

- Weekly reports - 730 Days

- Monthly reports - 1825 Days

- Bulk statistics aging - 14 Days

Fast and slow threads settings on units:

Backup days and logs settings:

Mobility StarOS Medium Scale EPC on Single VM

StarOS requirements are based on the following:

- StarOS 16.0 MR1

- Reports: APN, Card, Context, DCCA, DCCA-group, DPCA, Diameter, Diameter-Acct, Diameter-Auth, ECS, eGTP-C, GTP-C, GTP-U, GTPP, ICSR, IMSA, IP Pool, Port, RADIUS Server, RADIUS-Group, SAEGW, RLF, RLF-Detailed, System, VLAN-NPU

- Gateway JVM size: 8 GB (ppm jvmsize 8192 gw)

- Unit JVM size: 16 GB (ppm jvmsize 16384 unit)

- 40 simultaneous users

- Maximum Network Size:

– Number of StarOS ASR 5000/ASR 5500s per unit: 40

– Number of units: 1 unit installed with gateway on a VM machine

– 5 StarOS ASR 5000/ASR 5500s with heavy bulk statistics configuration

– 35 StarOS ASR 5000/ASR 5500s with light bulk statistics configuration

Number of instances for each bulk statistics schema name/number of instances)

- Linux version from Supported Operating Systems

- CPU type: 8-core Xeon E5-2680 2.70 GHz

- CPU type: 8-core Xeon E5-2670 2.60 GHz

- CPU type: 8-core Xeon E5-2650V2 2.60 GHz

- CPU type: 8-core Xeon X5675 3.07 GHz

- CPU number: 2

- vCPU number: 16 or more for VM environments

- RAM size: 64 GB or greater

- Swap space: 100 GB or greater

- Gateway JVM size: 8 GB

- Unit JVM size: 16 GB

- Local Storage Options for VM environments:

– 1*146 GB SAS 15K RPM drive for OS and Prime Performance Manager

– 2*146 GB SAS 15K RPM drive for bulk statistics CSV file

– 2*146 GB SAS 15K RPM drive for Prime Performance Manager database

Mobility StarOS Scale EPC Distributed Active Only Configuration

Requirements for Mobility StarOS scale EPC distributed in an active-only configuration are based on the following:

– Prime Performance Manager System

– Number of StarOS Cisco ASR 5000/5500s per unit: 100

– Total number of StarOS Cisco ASR 5000/5500s: 300

– 10 StarOS ASR 5000/ASR 5500s/QvPC with heavy bulk statistics configuration

– 90 StarOS ASR 5000/ASR 5500s/QvPC with light bulk statistics configuration

– 5-minute reports - 3 Days - 52.00 GB disk usage per day

– 15-minute reports - 7 Days - 17.50 GB disk usage per day

– Hourly reports - 14 Days - 4.40 GB disk usage per day

– Daily reports - 94 Days - 0.18 GB disk usage per day

– Weekly reports - 730 Days - 0.18 GB disk usage per week

– Monthly reports - 1825 Days - 0.18 GB disk usage per month

– 74.1 GB data generated per day with data generation rate of 899 KB/sec
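As a quick consistency check on the figures above, the daily volume can be derived from the data generation rate. The following is a minimal sketch that assumes binary units (1 GB = 1024 × 1024 KB); the guide does not state which unit convention it uses, and the function name is illustrative only.

```python
SECONDS_PER_DAY = 24 * 60 * 60
KB_PER_GB = 1024 * 1024  # assumption: binary units, not stated in the guide


def rate_to_gb_per_day(kb_per_sec: float) -> float:
    """Convert a sustained data generation rate in KB/sec to GB per day."""
    return kb_per_sec * SECONDS_PER_DAY / KB_PER_GB


if __name__ == "__main__":
    # 899 KB/sec is the rate quoted for this configuration.
    print(f"{rate_to_gb_per_day(899):.1f} GB per day")
```

Under that assumption, 899 KB/sec works out to the 74.1 GB per day quoted above, so the same helper can be used to sanity-check a measured rate against a planned disk budget.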

- Unit backup file size: total data size of unit database + 40 GB for logs/device cache/other files

- Unit backup partition size:

Heavy StarOS bulk statistics schema configuration:

Light StarOS bulk statistics schema configuration:

– Cisco UCS C240 M3 for non-NEBs-compliant systems

– Linux version from Supported Operating Systems

– Swap space: 16 GB or greater

Cisco UCS C240 M3 - Performance and Reliability Option

– 2*300 GB SAS 15K RPM drives for OS

– 8*300 GB SAS 15K RPM drives for database using RAID10

– 8*300 GB SAS 15K RPM drives for backups using RAID10

– Using two PCIe RAID controllers

Cisco UCS C240 M3 - Basic Option

– 2*300 GB SAS 15K RPM drives for OS

– 4*300 GB SAS 10K RPM drives for database using RAID0

– 4*300 GB SAS 10K RPM drives for backups using RAID0

– Using two PCIe RAID controllers

External Storage Options and Performance Requirements

– Linux OS and Prime Performance Manager gateway installation and database

– 25 GB used for CSV exports enabled using Prime Performance Manager/3GPP format

– 60 IOPS with about 100% write operations

– Driven by Prime Performance Manager logs and database

– Each unit with 100 active StarOS ASR 5000/ASR 5500s - 3 total units

– Cisco UCS C240 M3 for non-NEBs-compliant systems

– Linux version from Supported Operating Systems

– Swap space: 32 GB or greater

– Unit number of slow threads: 12

– Unit number of fast threads: 12

Storage: Cisco UCS C240 M3 - Performance and Reliability Option

– 1*300 GB SAS 15K RPM drive for OS

– 8*300 GB SAS 15K RPM drives for database using RAID10

– 8*300 GB SAS 15K RPM drives for backups using RAID10

– 8*300 GB SAS 15K RPM drives for bulk statistics CSV file using RAID10

– Using two PCIe RAID controllers

Storage: Cisco UCS C240 M3 - Performance Option

– 1*300 GB SAS 10K RPM drive for OS

– 8*300 GB SAS 15K RPM drives for database using RAID0

– 8*300 GB SAS 15K RPM drives for backups using RAID0

– 8*300 GB SAS 15K RPM drives for bulk statistics CSV file using RAID0

– Using two PCIe RAID controllers

External Storage Options and Performance Requirements

– Linux OS and Prime Performance Manager unit installation

– 90 IOPS with about 99% write operations

– Driven by Prime Performance Manager device cache writes and logs

– Device cache and logs can also be relocated if necessary

– Prime Performance Manager unit database

– 300 IOPS with about 30% write operations

– Prime Performance Manager unit backups

– 600 IOPS with about 30% write operations

– Sustained IOPS during backup periods; quiet at other times

– Prime Performance Manager directory for storing Cisco ASR 5000 bulk statistics CSV files

Code Division Multiple Access Distributed Mobility Scale Requirements

Code Division Multiple Access (CDMA) mobility scale requirements are based on the following configurations:

- StarOS 15.0

- Reports: BCMCS, FA, HA, HSGW, LAC, LNS, MIPv6 HA, MVS, PPP, RP

- Gateway JVM size: 8 GB (ppm jvmsize 8192 gw)

- Unit JVM size: 24 GB (ppm jvmsize 24576 unit)

- 50 simultaneous users

- 1+1 unit HA deployments are recommended

- Maximum network size:

– Number of StarOS Cisco ASR 5000/5500s per unit: 140

– Total StarOS Cisco ASR 5000/5500s: 280
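The JVM sizes in the list above are stated in GB, while the accompanying `ppm jvmsize` commands use values that appear to be megabytes (8 GB as 8192, 24 GB as 24576). The sketch below builds the command string from a GB figure under that assumption; the helper name `jvmsize_command` is hypothetical and only the `ppm jvmsize <size> gw|unit` form shown in this guide is used.

```python
def jvmsize_command(size_gb: int, target: str) -> str:
    """Build a 'ppm jvmsize' command line from a JVM size given in GB.

    Assumes the command takes the size in MB (1 GB = 1024 MB), as the
    examples in this section suggest.
    """
    if target not in ("gw", "unit"):
        raise ValueError("target must be 'gw' or 'unit'")
    if size_gb <= 0:
        raise ValueError("size_gb must be positive")
    return f"ppm jvmsize {size_gb * 1024} {target}"


if __name__ == "__main__":
    print(jvmsize_command(8, "gw"))     # gateway JVM size for this profile
    print(jvmsize_command(24, "unit"))  # unit JVM size for this profile
```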

Number of instances for each bulk statistics schema (schema name/number of instances)

– Cisco UCS C240 M3 for non-NEBs-compliant systems

– Linux version from Supported Operating Systems

– CPU type: 8-core (UCS C240 M3) Xeon E5-2680 2.7 GHz

– Swap space: 16 GB or greater

Cisco UCS C240 M3 - Performance and Reliability Option

– 1*300 GB SAS 15K RPM drive for OS

– 4*300 GB SAS 15K RPM drives for database using RAID10

– 4*300 GB SAS 15K RPM drives for backups using RAID10

Cisco UCS C240 M3 - Basic Option

– 1*300 GB SAS 15K RPM drive for OS

– 2*300 GB SAS 10K RPM drives for database using RAID0

– 2*300 GB SAS 10K RPM drives for backups using RAID0

– Cisco UCS C240 M3 for non-NEBs-compliant systems

– Linux version from Supported Operating Systems

– CPU type: 8-core (UCS C240 M3) Xeon E5-2680 2.7 GHz

– Swap space: 32 GB or greater

Cisco UCS C240 M3 - Performance and Reliability Option

– 1*300 GB SAS 15K RPM drive for OS

– 4*300 GB SAS 15K RPM drives for database using RAID10

– 4*300 GB SAS 15K RPM drives for backups using RAID10

– 6*300 GB SAS 15K RPM drives for bulk statistics CSV files using RAID10

Cisco UCS C240 M3 - Performance Option

– 1*300 GB SAS 10K RPM drive for OS

– 2*300 GB SAS 15K RPM drives for database using RAID0

– 2*300 GB SAS 15K RPM drives for backups using RAID0

– 3*300 GB SAS 15K RPM drives for bulk statistics CSV files using RAID0

Requirements for Small Cell Networks

Small cell server requirements are based on the following configurations:

– Models: USC 3330, USC 3331, USC 7330, USC 5310

– UCS 3K/5K/7K/9K Series InterDevice: UCS XK 3G AP ALL KPI InterDevice

– UCS 3K/5K/7K/9K Series InterDevice: UCS XK 3G AP Availability InterDevice

– UCS 3K/5K/7K/9K Series InterDevice: UCS XK 3G AP CS InterDevice

– UCS 3K/5K/7K/9K Series InterDevice: UCS XK 3G AP PS InterDevice

– UCS 3K/5K/7K/9K Series: UCS XK 3G AP ALL KPI

– UCS 3K/5K/7K/9K Series: UCS XK 3G AP AP Availability

– UCS 3K/5K/7K/9K Series: UCS XK 3G AP CS KPI

– UCS 3K/5K/7K/9K Series: UCS XK 3G AP Multi DB Table

– UCS 3K/5K/7K/9K Series: UCS XK 3G AP PS KPI

- Report intervals: 15-minute, hourly, daily, weekly, monthly

- All systems specified were tested with:

- ppm backupdays 1 both; ppm backuplogs disable both

- CSV exporting of all data to gateway: Enabled

- Report aging:

300,000 3G Access Points Small Cell Scale on Distributed Servers with Managed ULS Redundancy

Requirements for 300,000 APs on distributed servers with managed ULS redundancy include:

– Number of 3G APs per ULS: 150,000

– Total number of 3G APs per unit: 150,000

Each ULS supports 150,000 APs, configured as follows:

– 50,000 3G APs with 15-minute pegging and upload intervals

– 100,000 3G APs with 1-hour pegging and 1-day upload intervals

Gateway side configured with 2,500 distinct groups via GDDT

– 15-minute reports - 7 Days - 6.4 GB disk usage per day

– Hourly reports - 14 Days - 1.9 GB disk usage per day

– Daily reports - 94 Days - 0.12 GB disk usage per day

– Weekly reports - 730 Days - 0.12 GB disk usage per week

– Monthly reports - 1825 Days - 0.12 GB disk usage per month

– 8.6 GB unit DB data generated per day with a data generation rate of 104.3 KB/sec

- Unit backup file size: 100 GB (total data size of unit database plus 5 GB for logs/device cache/other files)

- Unit backup partition size: 220 GB

- Unit backup/restore speed: average of 6 GB per minute

- Cisco UCS C210 M3 for non-NEBs-compliant systems

- Cisco UCS C220 M3 for non-NEBs-compliant systems

- Cisco UCS C240 M3 for non-NEBs-compliant systems

- Cisco UCS B200 M3 for non-NEBs-compliant systems

- Linux version from Supported Operating Systems

- CPU type: Intel (R) Xeon CPU E5-2680 @ 2.7 GHz

- Core number: 8 or more

- RAM size: 98 GB or greater

- Swap space: 50 GB or greater

- Gateway JVM size: 8 GB

- Unit JVM size: 12 GB

- Local storage options

Cisco UCS C210 M2/C220 M3/C240 M3 - Performance Option

– 1*100 GB SAS 15K RPM drive for OS and Prime Performance Manager

– 2*200 GB SAS 15K RPM drives for database using RAID0

– 4*200 GB SAS 10K RPM drives for backup using RAID0

Cisco UCS C240 M3/C220 M3/C210 M3/B200 M3 - Basic Option

– 4*300 GB SAS 10K RPM drives for OS and Prime Performance Manager using RAID0

External Storage Options and Performance Requirements

Linux OS and Prime Performance Manager gateway/unit installation and gateway database

– 10 IOPS with nearly 100% write operations

– Driven by device cache writes and logs (device cache and logs can be relocated if necessary)

Prime Performance Manager database and gateway exported reports

– 35 GB used for CSV export in ppm format

– 180 IOPS with nearly 98% write operations

Prime Performance Manager gateway and unit backups

– 1500 IOPS with nearly 100% write operations

– Sustained IOPS during backup periods; quiet at other times

120,000 3G Access Points Small Cell Scale on a Single Server

Requirements for 120,000 3G APs on a single server in small cell scale include:

– 15-minute reports - 7 Days - 8.8 GB disk usage per day

– Hourly reports - 14 Days - 2.2 GB disk usage per day

– Daily reports - 94 Days - 0.1 GB disk usage per day

– Weekly reports - 730 Days - 0.1 GB disk usage per week

– Monthly reports - 1825 Days - 0.1 GB disk usage per month

- 11.1 GB data generated per day with data generation rate of 134.7 KB/sec

- Unit backup file size: total data size of unit database + 40 GB for logs/device cache/other files

- Unit backup partition size: 2 * Unit backup file size; multiplied by 1.1 for safety

- Unit backup/restore speed: average of 6 GB per minute

- Network size:

Note: If the 3G AP file format is XML, only 20,000 APs per ULS are supported.

– 50,000 3G APs with 15-minute pegging and upload intervals

– 70,000 3G APs with 1-hour pegging and 1-day upload intervals
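The backup sizing rules stated above for this configuration (backup file = unit database size plus 40 GB, partition = 2 × backup file size × 1.1, and an average backup/restore speed of 6 GB per minute) can be worked through as follows. This is a minimal sketch: the function names are illustrative, and the 100 GB database size in the example is a made-up input, not a figure from this guide.

```python
def backup_file_size_gb(db_size_gb: float, overhead_gb: float = 40.0) -> float:
    """Unit backup file size: database size plus logs/device cache/other files."""
    return db_size_gb + overhead_gb


def backup_partition_size_gb(file_size_gb: float,
                             copies: int = 2,
                             safety_factor: float = 1.1) -> float:
    """Unit backup partition size: 2 x backup file size, times 1.1 for safety."""
    return copies * file_size_gb * safety_factor


def backup_minutes(file_size_gb: float, gb_per_minute: float = 6.0) -> float:
    """Approximate backup or restore time at the quoted average speed."""
    return file_size_gb / gb_per_minute


if __name__ == "__main__":
    db_size = 100.0  # hypothetical unit database size in GB
    file_size = backup_file_size_gb(db_size)
    print(f"backup file:      {file_size:.0f} GB")
    print(f"backup partition: {backup_partition_size_gb(file_size):.0f} GB")
    print(f"backup time:      {backup_minutes(file_size):.1f} minutes")
```

Applied to a 100 GB backup file, the same partition rule gives 220 GB, which agrees with the partition size listed for the 300,000 AP distributed configuration.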

Table 1-16 shows the UCS C240 M3 non-NEBs-compliant configuration.

- Cisco UCS C240 M3 for non-NEBs-compliant systems with Linux version from Supported Operating Systems

RAN Management System Requirements

Requirements for the small cell RAN Management System (RMS) are based on 120,000 3G APs on a single server. Requirements are based on the following configurations:

– RMS: 3G AP InterDevice: 3G AP Availability InterDevice

– RMS: 3G AP InterDevice: 3G AP CS InterDevice

– RMS: 3G AP InterDevice: 3G AP PS InterDevice

– RMS: 3G AP KPI: 3G AP Availability

– RMS: 3G AP KPI: 3G AP CS KPI

– RMS: 3G AP KPI: 3G AP PS KPI

- Report intervals: 15-minute, hourly, daily, weekly, monthly

- Aging values and baseline disk space requirements:

– 15-minute reports: 7 days, 8.8 GB disk usage per day

– Hourly reports: 14 days, 2.2 GB disk usage per day

– Daily reports: 94 days, 0.1 GB disk usage per day

– Weekly reports: 730 days, 0.1 GB disk usage per week

– Monthly reports: 1825 days, 0.1 GB disk usage per month

– 11.1 GB data generated per day with data generation rate of 134.7 KB/sec

- Unit backup file size: total data size of unit database plus 40 GB for logs/device cache/other files

- Unit backup partition size:

– Number of 3G APs per ULS: 120,000

– Total number of 3G APs per unit: 120,000

– Each ULS has 50,000 3G APs with 15-minute pegging and upload intervals, and 70,000 3G APs with 1-hour pegging and 1-day upload intervals

– Cisco UCS C22 M3 for non-NEBs-compliant systems

– Cisco UCS C220 M3 for non-NEBs-compliant systems

– Cisco UCS C240 M3 for non-NEBs-compliant systems

– Cisco UCS B200 M3 for non-NEBs-compliant systems

– Linux version from Supported Operating Systems

– Swap space: 24 GB or greater

– 1*146 GB SAS 15K RPM drive for OS

– 4*146 GB SAS 15K RPM drives for database using RAID10

– 8*146 GB SAS 10K RPM drives for backups using RAID10

– 1*146 GB SAS 15K RPM drive for OS and Prime Performance Manager

– 2*146 GB SAS 15K RPM drives for database

– 4*146 GB SAS 10K RPM drives for backup using RAID0

– 4*300 GB SAS 10K RPM drives for OS and Prime Performance Manager using RAID0

– 4*300 GB SAS 10K RPM drives for OS and Prime Performance Manager using RAID0

External Storage Options and Performance Requirements

- Local Root partition

- Linux OS and Prime Performance Manager gateway/unit installation and gateway database

– 8 IOPS with nearly 100% write operations

– Driven by Prime Performance Manager device cache writes and logs

– Device cache and logs can also be relocated if necessary

– 30 GB used for CSV export in Prime Performance Manager format

– 180 IOPS with nearly 100% write operations

– 300 IOPS with nearly 100% write operations

– Sustained IOPS during backup periods; quiet at other times

Requirements for collectd

Prime Performance Manager supports Apache and MySQL collectd reports with a maximum of 5,000 devices. Server requirements are based on the following report aging values:

- 5-minute—3 days

- 15-minute—7 days

- Hourly—14 days

- Daily—94 days

- Weekly—730 days

- Monthly—1825 days

- Back up days and logs settings:

Table 1-17 shows the collectd server requirements.


Single Server ESXi Hypervisor Requirements

Requirements for a single-server ESXi hypervisor are based on the following configurations:

- 15-minute, hourly, daily, weekly, and monthly ESXi hypervisor report intervals

- Maximum network size:

Table 1-18 shows the UCS C240 M3 non-NEBs-compliant requirements.

- Cisco UCS C240 M3 for non-NEBs-compliant systems with Linux version from Supported Operating Systems

- 8-core (UCS C240 M3), UCS servers use Xeon(R) E5-2680 processors

- Linux OS and Prime Performance Manager gateway, unit installation, and gateway database

- Prime Performance Manager unit database and gateway exported reports

Single Server Requirements for Data Collection Manager

Single server requirements for Data Collection Manager (DCM) are based on the following configurations:

- Enabled reports: Resource CPU and Resource Memory

- Enabled report intervals: 5-minute, 15-minute, hourly, daily, weekly, monthly

- Report aging values and baseline disk space requirements:

– 5-minute reports - 3 Days - 1.57 GB disk usage per day

– 15-minute reports - 7 Days - 0.52 GB disk usage per day

– Hourly reports - 14 Days - 0.13 GB disk usage per day

– Daily reports - 94 Days - 0.01 GB disk usage per day

– Weekly reports - 730 Days - 0.01 GB disk usage per week

– Monthly reports - 1825 Days - 0.01 GB disk usage per month

– 2.23 GB data generated per day with data generation rate of 27 KB/sec
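The aging values above can be combined into a rough steady-state estimate of database disk use: each interval retains its data for the listed number of days, so its footprint is approximately the retention period multiplied by its usage rate. The sketch below is an illustration under stated assumptions, not a formula from this guide: it treats a month as 30 days, ignores indexes and other overhead, and uses illustrative names.

```python
# (interval, retention in days, GB written per period, period length in days)
DCM_AGING = [
    ("5-minute", 3, 1.57, 1),
    ("15-minute", 7, 0.52, 1),
    ("hourly", 14, 0.13, 1),
    ("daily", 94, 0.01, 1),
    ("weekly", 730, 0.01, 7),
    ("monthly", 1825, 0.01, 30),  # assumption: 30-day month
]


def daily_growth_gb(aging) -> float:
    """Approximate GB written per day across all report intervals."""
    return sum(gb / period for _, _, gb, period in aging)


def steady_state_gb(aging) -> float:
    """Approximate GB held once every interval has reached its aging limit."""
    return sum(days / period * gb for _, days, gb, period in aging)


if __name__ == "__main__":
    print(f"daily growth: {daily_growth_gb(DCM_AGING):.2f} GB")
    print(f"steady state: {steady_state_gb(DCM_AGING):.2f} GB")
```

With the values listed for this configuration, the daily growth comes to about 2.23 GB, in line with the quoted figure, and the retained data settles at roughly 12.8 GB under these assumptions.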

- Unit backup file size: total unit database data size plus 10 GB for logs/device cache/other files

- Unit backup partition size:

To run at maximum supported scale, set the following configurations in the unit Bulkstats.properties file located in the properties directory:

- DCM_DROP_DIR = /root/dcmdrop (example only; set this to your actual drop folder)

- DCM_PROFILE_FILE_RETAIN = 300

- DCM_DIR_MONITOR_REFRESH_INTERVAL = 100
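To verify that a unit's Bulkstats.properties carries the settings listed above, a small check along the following lines may help. It is a sketch only: it assumes the file uses plain `KEY = value` lines, the helper names are hypothetical, and the sample text stands in for the real file, whose full path is not given here.

```python
# Values listed in this section for running at maximum supported scale.
RECOMMENDED = {
    "DCM_PROFILE_FILE_RETAIN": "300",
    "DCM_DIR_MONITOR_REFRESH_INTERVAL": "100",
}


def parse_properties(text: str) -> dict:
    """Parse simple KEY = value lines, skipping blanks and comment lines."""
    props = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith(("#", "!")):
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def settings_to_fix(props: dict) -> dict:
    """Return recommended settings that are missing or set differently."""
    return {k: v for k, v in RECOMMENDED.items() if props.get(k) != v}


SAMPLE = """\
# DCM settings (sample content for illustration)
DCM_DROP_DIR = /root/dcmdrop
DCM_PROFILE_FILE_RETAIN = 300
DCM_DIR_MONITOR_REFRESH_INTERVAL = 100
"""

if __name__ == "__main__":
    props = parse_properties(SAMPLE)
    print("drop directory:", props.get("DCM_DROP_DIR", "<not set>"))
    print("settings to fix:", settings_to_fix(props) or "none")
```

DCM_DROP_DIR is reported rather than checked, because its value is site-specific.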

- Number of devices: 3000

- Device type: Cisco ASR 1000

- Bulk statistics interval for 3000 devices: 300 seconds

- Cisco UCS C240 M3 for non-NEBs-compliant systems

- Cisco UCS C220 M3 for non-NEBs-compliant systems

- Linux version from Supported Operating Systems

- CPU type: 8-core (UCS C240 M3) Xeon E5-2680 2.7 GHz

- RAM size: 64 GB or greater

- Swap space: 32 GB or greater

- Gateway JVM size: 8 GB

- Unit JVM size: 16 GB

– 1*300 GB SAS 15K RPM drive for OS and Prime Performance Manager

– 4*300 GB SAS 10K RPM drives for CSV reports and database using RAID10

– 6*600 GB SAS 10K RPM drives for backups using RAID10

External Storage Options and Performance Requirements

– Linux OS and Prime Performance Manager gateway/unit installation and gateway database

– 340 IOPS with nearly 99% write operations

– Driven by Prime Performance Manager device cache writes and logs

– Device cache and logs can also be relocated if necessary

– Prime Performance Manager unit database and gateway exported reports

– 20 IOPS with nearly 95% write operations

– Prime Performance Manager gateway and unit backups

– 550 IOPS with nearly 89% write operations

– Sustained IOPS during backup periods; quiet at other times

Requirements for Managing KVM Deployments

Prime Performance Manager requirements for KVM deployments are based on the following configurations:

– KVM VM Hypervisor-Level Disk Space

– KVM VM Interface Receive Rates

– KVM Host VM Availability Numbers

– KVM VM Interface Packet Rates

- Report intervals: 1-minute, 5-minute, 15-minute, hourly, daily, weekly, monthly

- Back up days and logs:

– 15-minute reports - 365 Days

– Cisco UCS C240 M3 for non-NEBs-compliant systems

– Cisco UCS C22 M3 for non-NEBs-compliant systems

– Cisco UCS C200 M2 for non-NEBs-compliant systems - EOS

– Cisco UCS B200 M3 for non-NEBs-compliant systems (external storage only)

– Oracle Netra X3-2 or equivalent for NEBs-compliant systems

– Linux version from Supported Operating Systems

– CPU type: 6-core (UCS C240 M3) Xeon E5-2640 2.5 GHz

– CPU type: 6-core (UCS B200 M3) Xeon E5-2640 2.5 GHz

– CPU type: 6-core (UCS C22 M3) Xeon E5-2430v2 2.5 GHz

– CPU type: 6-core (UCS C22 M3) Xeon E5-2440 2.4 GHz

– CPU type: 6-core (UCS C220 M3) Xeon E5-2640 2.5 GHz

– CPU type: 6-core (UCS C220 M3) Xeon E5-2630v2 2.6 GHz

– CPU type: 6-core (UCS C200 M2) Xeon E5645 2.4 GHz

– CPU type: 8-core (Oracle X3-2) Xeon E5-2658 2.1 GHz

– vCPU number: 6 or more for VM environments

– Swap space: 24 GB or greater

– 1*300 GB SAS 15K RPM drive for OS and Prime Performance Manager

– 6*300 GB SAS 15K RPM drives for database using RAID10

– 8*300 GB SAS 15K RPM drives for backups using RAID10

– 1*300 GB SAS 15K RPM drive for OS and Prime Performance Manager

– 3*300 GB SAS 15K RPM drives for database using RAID0

– 4*300 GB SAS 15K RPM drives for backups using RAID0

– 1*300 GB SAS 15K RPM drive for OS and Prime Performance Manager using RAID0

– 3*300 GB SAS 10K RPM drives for database using RAID0

– 4*300 GB SAS 10K RPM drives for backups using RAID0

– Using one embedded RAID or PCIe RAID controller

– 1*300 GB SAS 15K RPM drive for OS and Prime Performance Manager

– 1*600 GB SAS 15K RPM drive for database

– 2*600 GB SAS 10K RPM drives for backups using RAID0

– 1*146 GB SAS 15K RPM drive for OS and Prime Performance Manager

– 1*600 GB SAS 15K RPM drive for database

– 2*600 GB SAS 10K RPM drives for backups using RAID0

– 1*146 GB SAS 10K RPM drive for OS and Prime Performance Manager

– 1*600 GB SAS 10K RPM drive for database

– 2*600 GB SAS 10K RPM drives for backups using RAID0

External Storage Options and Performance Requirements

– Linux OS and Prime Performance Manager gateway/unit installation and gateway database

– 150 IOPS with nearly 100% write operations

– Driven by Prime Performance Manager device cache writes and logs

– Device cache and logs can also be relocated if necessary

– Prime Performance Manager database and gateway exported reports

– 380 IOPS with nearly 99% write operations

– Prime Performance Manager gateway and unit backups

– 2200 IOPS with nearly 98% write operations

– Sustained IOPS during backup periods; quiet at other times

Server Requirements for Managing KVM and Cisco Unified Computing Service Manager Deployments

Prime Performance Manager requirements for managing KVM and Cisco Unified Computing Service Manager are listed below. Requirements are based on the following Prime Performance Manager settings:

– KVM: KVM Host, KVM Host Inventory, and KVM VM

- UCS Clusters: All

- Availability: ICMP Ping/ ICMP Ping Aggregate

- Availability: Interface Status/ Interface Status Aggregate/ Interfaces

- OpenStack: All

- PPM System: All

- Resource: All

- Report intervals: 5 Min, 15 Min, Hourly, Daily, Weekly, Monthly

- ppm backupdays 1 both

- ppm backuplogs disable both

- CSV exporting of all data to gateway: Disabled

- 510 KVM hosts with 11,000 active VMs and 65 UCSM devices on a single server

- Integrate with OpenStack Ceilometer

- 5 Minute reports: 30 Days (5.61 GB disk usage per day)

- 15 Minute reports: 30 Days (1.87 GB disk usage per day)

- Hourly reports: 90 Days (0.47 GB disk usage per day)

- Daily reports: 94 Days (0.02 GB disk usage per day)

- Weekly reports: 730 Days (0.02 GB disk usage per week)

- Monthly reports: 1825 Days (0.02 GB disk usage per month)

- 8 GB data generated per day with data generation rate of 97.1 KB/sec

- Unit Backup File Size: 350 GB

- Total data size of unit database + 40 GB for logs/device cache/other files

- 500 hosts average 20 VMs each; 10 hosts average 100 VMs each

- Number of active VMs: 11,000

- Linux version from Supported Operating Systems

- KVM VM server in UCS B200 M3 with RHEL6.5

- Swap space: 40 GB or greater

- 1*100 GB SAS 15K RPM drive for OS and Prime Performance Manager using RAID0

- 4*300 GB SAS 15K RPM drives for database using RAID10

- 8*300 GB SAS 15K RPM drives for backups using RAID10
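As a rough cross-check of the report retention settings listed earlier in this section, the following sketch estimates how much disk the unit database holds once every report interval has filled its retention window. It is an illustrative calculation, not a Cisco sizing tool: the function names are hypothetical, months are assumed to be 30 days, and database overhead such as indexes is ignored.

```python
# Retention figures copied from the KVM/UCSM list in this section:
# interval -> (retention in days, GB written per period, period length in days)
RETENTION = {
    "5-minute": (30, 5.61, 1),
    "15-minute": (30, 1.87, 1),
    "hourly": (90, 0.47, 1),
    "daily": (94, 0.02, 1),
    "weekly": (730, 0.02, 7),
    "monthly": (1825, 0.02, 30),  # assumption: 30-day months
}


def steady_state_gb(retention):
    """Disk space held once each interval has filled its retention window."""
    return sum(days / period * gb for days, gb, period in retention.values())


def daily_growth_gb(retention):
    """Average amount of report data written per day across all intervals."""
    return sum(gb / period for _, gb, period in retention.values())


if __name__ == "__main__":
    print(f"Steady-state report data: {steady_state_gb(RETENTION):.1f} GB")
    print(f"Average daily growth:     {daily_growth_gb(RETENTION):.2f} GB/day")
```

The daily growth from this sketch comes to just under 8 GB, in line with the 8 GB per day quoted in the list. Treat the steady-state total as a lower bound when planning database and backup volumes.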

External Storage Options and Performance Requirements

- Linux OS and Prime Performance Manager unit installation

- 12 IOPS with nearly 94% write operations

- Driven by Prime Performance Manager device cache writes and logs

- 27 IOPS with about 93% write operations

- 960 IOPS with nearly 100% write operations

Requirements for Ceph Deployments

Prime Performance Manager requirements for Ceph deployments are based on the following configurations:

- Storage: CEPH: Cluster Level: Global space

- Storage: CEPH: Cluster Level: Monitors

- Storage: CEPH: Cluster Level: OSD IO Aggregate

- Storage: CEPH: Cluster Level: OSD Latency Aggregate

- Storage: CEPH: Cluster Level: Placement Groups

- Storage: CEPH: Cluster Level: Pools

- Storage: CEPH: Node Level: OSD: Filestore

- Storage: CEPH: Node Level: OSD: IO

- Storage: CEPH: Node Level: OSD: Journal

- Storage: CEPH: Node Level: OSD: Latency

- Storage: CEPH: Node Level: OSD: OSD space

- Storage: CEPH: Node Level: OSD: Queue

- Report intervals: 1-minute, 5-minute, 15-minute, hourly, daily, weekly, monthly

- Back up days and logs:

- 1-minute reports: 7 Days (0.98 GB disk usage per day)

- 5-minute reports: 365 Days (0.20 GB disk usage per day)

- 15-minute reports: 365 Days (0.07 GB disk usage per day)

- Hourly reports: 365 Days (0.02 GB disk usage per day)

- Daily reports: 365 Days (0.001 GB disk usage per day)

- Weekly reports: 730 Days (0.001 GB disk usage per week)

- Monthly reports: 1825 Days (0.001 GB disk usage per month)

- 1.27 GB data generated per day with data generation rate of 15.4 KB/sec

- Unit backup file size: total unit database data size plus 0.5 GB for logs/device cache/other files

- Unit backup partition size:

100 Ceph Nodes with 1-Minute Reports Enabled on a Single Server

Requirements for 100 Ceph nodes with 1-minute reports enabled on a single server are based on the following configurations:

- Cisco UCS C240 M3 for non-NEBS-compliant systems

- Cisco UCS C220 M3 for non-NEBS-compliant systems

- Linux version from Supported Operating Systems

- CPU type: Xeon E5-2680 @ 2.7 GHz

- Swap space: 64 GB or greater

- Cisco UCS C220 M3/C240 M3

- 1*300 GB SAS 15K RPM drive for OS and Prime Performance Manager

- 3*300 GB SAS 15K RPM drives for database using RAID0

- 4*300 GB SAS 15K RPM drives for backups using RAID0

- Using PCIe RAID controllers

- Dual power supply

External Storage Options and Performance Requirements

- Linux OS, Prime Performance Manager gateway/unit installation and gateway database

- 14 IOPS with 95% write operations; driven by Prime Performance Manager device cache writes and logs

- Prime Performance Manager database

- 15 IOPS with nearly 89% write operations

- Prime Performance Manager gateway and unit backups

- 375 IOPS with nearly 98% write operations; sustained IOPS during backup periods; quiet at other times

Requirements for TCP/HTTP Probes

Prime Performance Manager probe support is based on 500 TCP and 500 HTTP probes added in a low-end VM environment with no performance impact. This configuration is not an upper limit on the number of HTTP or TCP probes that Prime Performance Manager can support.

Probe support is based on the following configurations:

Requirements for NetFlow

Prime Performance Manager NetFlow requirements are based on the following configurations:

- NetFlow All Flows: Lowest Interval Only

- NetFlow Autonomous Systems: NetFlow AS

- NetFlow Endpoints: NetFlow Conversations

- NetFlow Endpoints: NetFlow Destinations

- NetFlow Endpoints: NetFlow Source Destinations

- NetFlow Endpoints: NetFlow Sources

- NetFlow Protocols: NetFlow Protocol

- 5-minute reports: 3 Days (65.7 GB disk usage per day)

- 15-minute reports: 7 Days (21.9 GB disk usage per day)

- Hourly reports: 14 Days (5.48 GB disk usage per day)

- Daily reports: 94 Days (0.23 GB disk usage per day)

- Weekly reports: 730 Days (0.23 GB disk usage per week)

- Monthly reports: 1825 Days (0.23 GB disk usage per month)

- 94 GB data generated per day with data generation rate of 1.114 MB/sec
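The daily volume and the data generation rate are two views of the same figure. A minimal sketch of the conversion follows, assuming binary units (1 GB = 1024 MB = 1,048,576 KB) and a rate averaged evenly over 24 hours; the function names are illustrative only.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def gb_per_day_to_mb_per_sec(gb_per_day):
    """Average write rate in MB/sec for a daily volume in GB (1 GB = 1024 MB)."""
    return gb_per_day * 1024 / SECONDS_PER_DAY


def gb_per_day_to_kb_per_sec(gb_per_day):
    """Average write rate in KB/sec for a daily volume in GB (1 GB = 1024 * 1024 KB)."""
    return gb_per_day * 1024 * 1024 / SECONDS_PER_DAY


if __name__ == "__main__":
    # NetFlow figure from this section: 94 GB per day
    print(f"{gb_per_day_to_mb_per_sec(94):.3f} MB/sec")
```

For 94 GB per day this works out to the 1.114 MB/sec quoted above. Actual write rates are bursty, so size storage against the IOPS figures rather than this average alone.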

Unit database size increment metrics are based on NetFlow V5 150K scale listed below.

- Total data size of unit database + 40 GB for logs/device cache/other files

For better NetFlow processing performance, disable the following in Administration > Report Settings:

- Weekly Report Flag

- Monthly Report Flag

- Export reports

- Export hourly 5-minute reports

- Export hourly 15-minute reports

- Backup days: ppm backupdays 1 both

Add the following to the unit System.properties file located in the properties directory:

- NF_MAX_UNPROCESSED_PKTS = 1000000

- DEV_CACHE_CONC_LOADERS = 32

- NETFLOW_MAX_PKTS_PROCESSED_PER_POLL = 500

- DB_QUEUE_SIZE = 100000

- DB_DEF_MAX_COMMIT_RECS = 5000

- DB_DEF_MAX_COMMIT_BYTES = 16777216
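If you script this change, the sketch below shows one way to merge the settings above into existing properties text without creating duplicate keys. It is a generic helper, not a Prime Performance Manager utility: the function name is hypothetical, the `KEY = value` layout is assumed from the list above, and you must supply the path to your own System.properties file.

```python
# Settings copied from the list above.
UNIT_SETTINGS = {
    "NF_MAX_UNPROCESSED_PKTS": "1000000",
    "DEV_CACHE_CONC_LOADERS": "32",
    "NETFLOW_MAX_PKTS_PROCESSED_PER_POLL": "500",
    "DB_QUEUE_SIZE": "100000",
    "DB_DEF_MAX_COMMIT_RECS": "5000",
    "DB_DEF_MAX_COMMIT_BYTES": "16777216",
}


def merge_properties(text, settings):
    """Return properties text in which every key in `settings` appears once.

    Keys already present are updated in place; missing keys are appended.
    Comment lines (starting with '#') and unrelated keys are left untouched.
    """
    remaining = dict(settings)
    merged = []
    for line in text.splitlines():
        stripped = line.strip()
        key = None
        if "=" in stripped and not stripped.startswith("#"):
            key = stripped.split("=", 1)[0].strip()
        if key in remaining:
            merged.append(f"{key} = {remaining.pop(key)}")
        else:
            merged.append(line)
    merged.extend(f"{key} = {value}" for key, value in remaining.items())
    return "\n".join(merged) + "\n"


# Example with in-memory text; read and write your own file as needed.
updated = merge_properties("DB_QUEUE_SIZE = 5000\n", UNIT_SETTINGS)
```

Back up the original file before writing any changes to it.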

Restart the unit for these settings to take effect. Set the following in the gateway Reports.properties file in the properties directory:

- RESOLVE_HOST_NAMES = disabled

- Maximum network size: NetFlow V5 150K Scale

- Number of devices: 750 Devices

- Speed of flow per device: 200 Flows/second

- Speed of flow per unit: 150,000 Flows/second

- Flow conversation number: 600 conversations. (The conversation number is calculated by multiplying the number of distinct values of each flow field, such as sourceIPAddress, destIPAddress, Port, Interface, and AS.)

- Maximum network size: NetFlow V9/IPFIX 150K Scale

- Number of devices: 500

- Flow speed per device: 200 flows/second

- Flow speed per unit: 100,000 flows/second

- Flow conversation number: 12,000 conversations
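The per-unit flow rates in the two scale profiles above follow from the device count multiplied by the per-device flow rate. The sketch below makes that arithmetic explicit so you can compare a planned deployment against the tested figures; the profile names and function are illustrative only.

```python
# (number of devices, flows per second per device), from the lists above
TESTED_PROFILES = {
    "NetFlow V5": (750, 200),
    "NetFlow V9/IPFIX": (500, 200),
}


def flows_per_unit(devices, flows_per_device_per_sec):
    """Aggregate flow rate arriving at a single unit, in flows per second."""
    return devices * flows_per_device_per_sec


if __name__ == "__main__":
    for name, (devices, rate) in TESTED_PROFILES.items():
        print(f"{name}: {flows_per_unit(devices, rate):,} flows/second per unit")
```

A deployment whose product of devices and per-device rate exceeds the tested per-unit figure falls outside the configurations described here.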

Table 1-19 shows the NetFlow Linux server requirements.