Cisco UCS Local Zoning Configuration Example


Overview

Cisco Unified Computing System™ (Cisco UCS®) Release 2.1 introduces the Local Zoning feature, which allows zoning for direct-attached storage arrays without requiring upstream Cisco® MDS 9000 Family or Cisco Nexus® 5000 Series Switches. This document describes how to prepare Cisco UCS for local zoning, discusses considerations related to the Local Zoning feature in the context of the overall Cisco UCS topology, and demonstrates how to configure local zoning and view and modify local zoning after the feature is configured.

Audience

This document is intended for system architects and engineers interested in understanding and deploying the Cisco UCS Local Zoning feature introduced in Cisco UCS 2.1. It assumes a basic functional knowledge and general understanding of industry standards for Fibre Channel over Ethernet (FCoE), LANs, Fibre Channel (FC), and storage in the context of Cisco UCS and Cisco networking products.

Test Environment

The test environment included the following:

- Cisco UCS

  - 2 Cisco UCS 6248UP 48-Port Fabric Interconnects

  - 2 Cisco UCS 2208XP Fabric Extender I/O modules (IOMs)

  - 1 Cisco UCS 5108 Blade Server Chassis

  - 1 Cisco UCS B200 M3 Blade Server with Cisco UCS Virtual Interface Card (VIC) 1240 modular LAN on motherboard (mLOM)

- Storage

  - EMC VNX5700 with 8-Gbps Fibre Channel adapters

Configuring Local Zoning

Note: The Cisco UCS Local Zoning feature currently is NOT supported when FC or FCoE uplinks exist. Disable and remove any FC and FCoE uplinks before proceeding.

Set FC Switching Mode

The Cisco UCS Local Zoning feature requires that the Cisco UCS fabric interconnects be configured in FC Switching Mode rather than the default FC End-Host Mode.

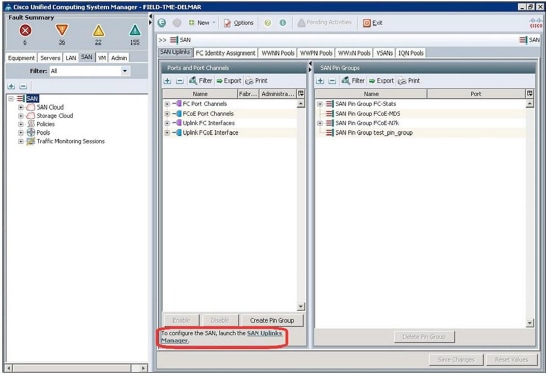

In Cisco UCS Manager, select the SAN tab in the navigation pane and select the top-level SAN node in the navigation tree. In the main window, select the SAN Uplinks tab, which displays the Port and Port Channels and SAN Pin Groups windows.

Click the SAN Uplinks Manager link in the main window. The SAN Uplinks Manager window will appear.

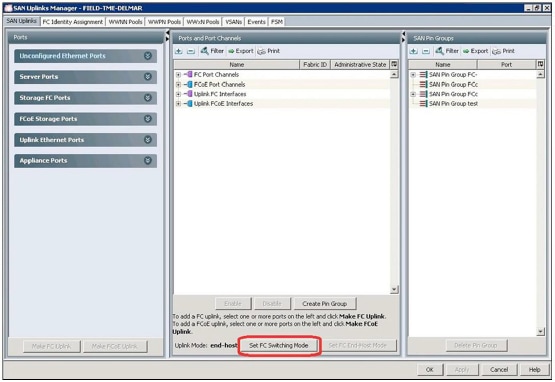

In the SAN Uplinks Manager window, select the SAN Uplinks tab.

Note: If you change the FC Uplink Mode, both fabric interconnects will immediately reboot, resulting in a 10- to 15-minute outage. Change the Uplink Mode only during a planned maintenance window.

The Uplink Mode field will show whether Cisco UCS is currently in FC End-Host mode or FC Switching mode. If Cisco UCS is already in FC Switching mode, click Cancel and proceed to the next steps.

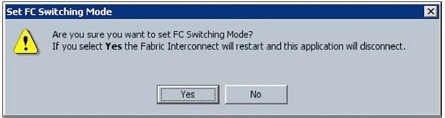

If Cisco UCS is in FC End-Host mode, click Set FC Switching Mode to change the uplink mode to FC Switching mode. The Set FC Switching Mode warning window will appear; click Yes to proceed.

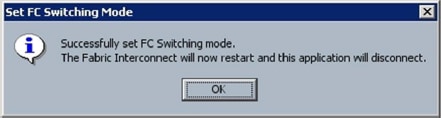

The Set FC Switching Mode success window will appear; click OK.

Both Cisco UCS fabric interconnects will now reboot.

Local Zoning and Existing Uplink Considerations

The Cisco UCS Local Zoning feature currently is not supported when FC or FCoE uplinks exist. Cisco UCS direct-attach storage currently supports the following configurations:

- Direct-attach storage with uplink zoning: In this configuration, FC or FCoE storage is directly connected to the fabric interconnects, and zoning is performed external to Cisco UCS through the Cisco MDS 9000 Family or Cisco Nexus 5000 Series Switches. Local Zoning is not supported in this configuration.

- Direct-attach storage with local zoning: In this configuration, FC or FCoE storage is directly connected to the fabric interconnects, and zoning is performed using the Cisco UCS Local Zoning feature. Any existing FC or FCoE uplink connections need to be shut down. Cisco UCS does not currently support the coexistence of active FC or FCoE uplink connections with the use of the Cisco UCS Local Zoning feature.

If uplinks were previously attached or active and are now shut down or removed, you can run the commands shown here from the Cisco UCS fabric interconnect command-line interface (CLI) to remove zones that were inherited from northbound Cisco MDS 9000 Family or Cisco Nexus 5000 Series Switches and are no longer valid or needed.

To display existing zones, enter the following commands from the fabric interconnect CLI:

FIELD-TME-DELMAR-A# connect nxos

FIELD-TME-DELMAR-A(nxos)# show zone

zone name MM-Server1 vsan 1

pwwn 20:93:59:04:14:45:01:4f

pwwn 20:93:59:04:14:45:01:5e

pwwn 50:0a:09:81:87:99:99:c9

pwwn 50:0a:09:82:87:99:99:c9

zone name MM-Server2 vsan 1

pwwn 20:93:59:04:14:45:01:2f

pwwn 20:93:59:04:14:45:01:3f

pwwn 50:0a:09:81:87:99:99:c9

pwwn 50:0a:09:82:87:99:99:c9

FIELD-TME-DELMAR-A(nxos)# exit

To display existing VSAN names, enter the following commands (this listing is necessary because the unneeded zones will be pruned on the basis of the VSAN name):

FIELD-TME-DELMAR-A# scope fc-uplink

FIELD-TME-DELMAR-A /fc-uplink # show vsan

VSAN:

Name Id FCoE VLAN Fabric ID FC Zoning Overall status

------ ----- ----------- ----------- ----------- ---------------

default 1 138 Dual Disabled Ok

FC-Stats-A 300 300 A Disabled Ok

FC-Stats-B 301 301 B Disabled Ok

FCoE-VSAN100 100 100 A Disabled Ok

FCoE-VSAN101 101 101 B Disabled Ok

FIELD-TME-DELMAR-A /fc-uplink # exit
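Because the pruning commands differ depending on whether a VSAN is dual-mode or fabric-specific, it can help to read the Fabric ID column programmatically when many VSANs are involved. The following Python sketch parses `show vsan` text that has been captured from the CLI session (for example, copied from an SSH log). The class and function names are hypothetical, and the sketch assumes the column layout shown in the listing above and VSAN names that contain no spaces.

```python
from dataclasses import dataclass


@dataclass
class VsanRow:
    name: str
    vsan_id: int
    fcoe_vlan: int
    fabric_id: str  # "Dual", "A", or "B"
    fc_zoning: str  # "Enabled" or "Disabled"
    status: str


def parse_show_vsan(output: str) -> list:
    """Parse the table printed by `show vsan` in the /fc-uplink scope.

    Assumes whitespace-separated columns in the order shown in the listing
    and VSAN names without embedded spaces.
    """
    rows = []
    for line in output.splitlines():
        fields = line.split()
        # Data rows have six columns and a numeric VSAN ID; the "VSAN:" label,
        # the header row, and the dashed separator line are skipped.
        if len(fields) == 6 and fields[1].isdigit():
            name, vsan_id, vlan, fabric_id, zoning, status = fields
            rows.append(VsanRow(name, int(vsan_id), int(vlan), fabric_id, zoning, status))
    return rows
```

Rows whose `fabric_id` is Dual are pruned with `scope vsan` directly under `/fc-uplink`; rows for Fabric A or B need `scope fabric` first.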

To remove existing zoning, enter the commands shown here for each VSAN that needs to be pruned.

For VSANs originally created as "Dual Mode" VSANs, enter the following commands:

FIELD-TME-DELMAR-A# scope fc-uplink

FIELD-TME-DELMAR-A /fc-uplink # scope vsan default

Note: "default" is the name of VSAN0001; replace "default" with the appropriate VSAN name.

FIELD-TME-DELMAR-A /fc-uplink/vsan # clear-unmanaged-fc-zones-all

FIELD-TME-DELMAR-A /fc-uplink/vsan* # commit-buffer

FIELD-TME-DELMAR-A /fc-uplink/vsan # exit

FIELD-TME-DELMAR-A /fc-uplink # exit

FIELD-TME-DELMAR-A#

For VSANs originally created in either Fabric A or Fabric B, enter the following commands:

FIELD-TME-DELMAR-A# scope fc-uplink

FIELD-TME-DELMAR-A /fc-uplink # scope fabric a

FIELD-TME-DELMAR-A /fc-uplink/fabric # scope vsan FC-Stats-A

(Replace FC-Stats-A with the appropriate VSAN name.)

FIELD-TME-DELMAR-A /fc-uplink/fabric/vsan # clear-unmanaged-fc-zones-all

FIELD-TME-DELMAR-A /fc-uplink/fabric/vsan* # commit-buffer

FIELD-TME-DELMAR-A /fc-uplink/fabric/vsan # exit

FIELD-TME-DELMAR-A /fc-uplink/fabric # exit

FIELD-TME-DELMAR-A /fc-uplink # exit

FIELD-TME-DELMAR-A#

Repeat these steps for each VSAN name on both fabric interconnects.
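The two command sequences above differ only in whether a `scope fabric` step is needed. As a sketch, the hypothetical Python helper below builds the sequence for one VSAN from its name and its Fabric ID (the value shown by `show vsan`), using only the commands that appear in this section. It returns the commands for review and does not connect to the fabric interconnect.

```python
def clear_unmanaged_zone_commands(vsan_name: str, fabric_id: str) -> list:
    """Build the UCS Manager CLI lines that clear unmanaged zones from one VSAN.

    fabric_id is the Fabric ID column from `show vsan`: "Dual", "A", or "B".
    Nothing is executed; the lines mirror the listings in this section.
    """
    fabric = fabric_id.strip().lower()
    if fabric == "dual":
        # Dual-mode VSANs are scoped directly under /fc-uplink.
        scopes = ["scope fc-uplink", f"scope vsan {vsan_name}"]
    elif fabric in ("a", "b"):
        # Fabric-specific VSANs are scoped under /fc-uplink/fabric.
        scopes = ["scope fc-uplink", f"scope fabric {fabric}", f"scope vsan {vsan_name}"]
    else:
        raise ValueError(f"unexpected fabric ID: {fabric_id!r}")
    # Clear, commit, then exit once for every scope that was entered.
    return scopes + ["clear-unmanaged-fc-zones-all", "commit-buffer"] + ["exit"] * len(scopes)


if __name__ == "__main__":
    print("\n".join(clear_unmanaged_zone_commands("FC-Stats-A", "A")))
```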

After the command processing is complete, confirm that the zones have been removed by logging into each fabric interconnect, connecting to the nxos scope, and entering the show zone command.
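When there are many zones, checking the `show zone` output by eye is error-prone. A minimal Python sketch, assuming the output has been captured as text in the format shown earlier in this section, groups each zone's pWWN members under its name and VSAN. The function name is hypothetical.

```python
import re

ZONE_LINE = re.compile(r"^\s*zone name (\S+) vsan (\d+)")
PWWN = re.compile(r"pwwn ([0-9a-fA-F]{2}(?::[0-9a-fA-F]{2}){7})")


def parse_show_zone(output: str) -> dict:
    """Map (zone name, VSAN ID) -> list of member pWWNs from `show zone` output."""
    zones = {}
    current = None
    for line in output.splitlines():
        header = ZONE_LINE.match(line)
        if header:
            current = (header.group(1), int(header.group(2)))
            zones[current] = []
            continue
        member = PWWN.search(line)
        if member and current is not None:
            zones[current].append(member.group(1).lower())
    return zones
```

An empty result means that no zones remain on that fabric interconnect.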

Create VSANs in the Storage Cloud

You need to create VSANs in the Storage Cloud containers on both Fabric A and Fabric B. VSANs created to support local zoning should not be duplicated in the SAN Cloud container.

Note: If the VSAN is not created in the Storage Cloud container, the VSAN will not be available to assign to the direct-attach storage port.

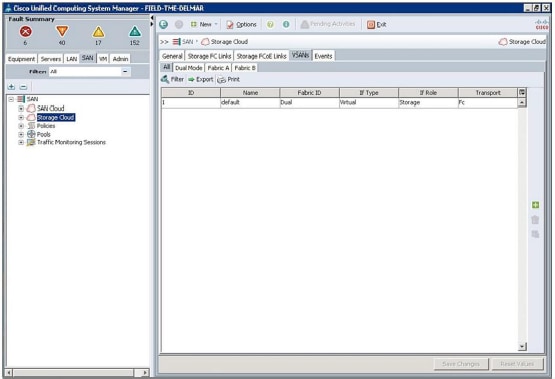

In Cisco UCS Manager, select the SAN tab in the navigation pane and select the Storage Cloud node. In the main window, select the VSANs tab and then select the All tab.

In the main window, click the green + button on the right. The Create VSAN window will appear.

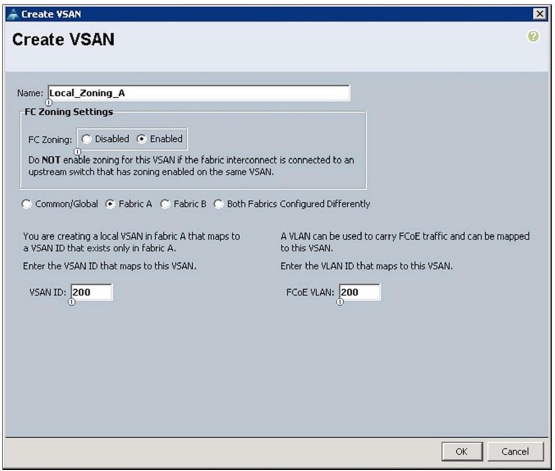

Complete the fields as follows:

- Name: Enter a name for the VSAN.

- FC Zoning: Change the setting to Enabled.

- Fabric Radio Buttons: Choose Fabric A.

- VSAN ID: Enter the VSAN ID for the VSAN being created on Fabric A.

- FCoE VLAN: Enter the FCoE VLAN ID for the VLAN that maps to this VSAN.

Click OK. A Create VSAN success window will appear. Click OK.

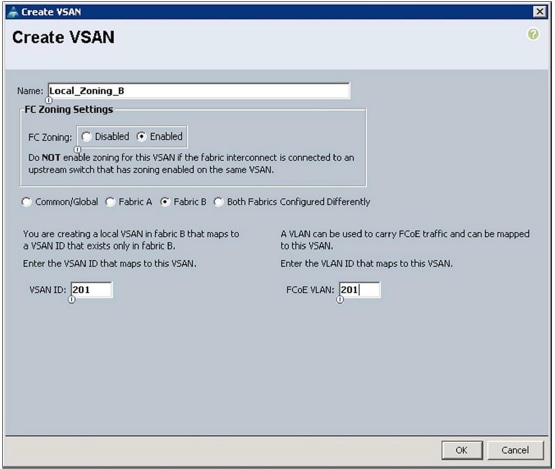

Repeat the same process for Fabric B:

In the main window, click the green + button on the right. The Create VSAN window will appear. Complete the fields as follows:

- Name: Enter a name for the VSAN.

- FC Zoning: Change the setting to Enabled.

- Fabric Radio Buttons: Choose Fabric B.

- VSAN ID: Enter the VSAN ID for the VSAN being created on Fabric B.

- FCoE VLAN: Enter the FCoE VLAN ID for the VLAN that maps to this VSAN.

Click OK. A Create VSAN success window will appear. Click OK.

Configure Direct-Attach Fibre Channel and FCoE Storage

Local zoning requires direct-attach FC or FCoE storage and configuration of FC or FCoE storage ports.

Note: Qualified direct-attach FC and FCoE storage vendors are currently limited to EMC, Hitachi Data Systems, and NetApp. Please refer to the latest Cisco UCS hardware compatibility list for the most current qualified vendors and models: http://www.cisco.com/en/US/products/ps10477/prod_technical_reference_list.html.

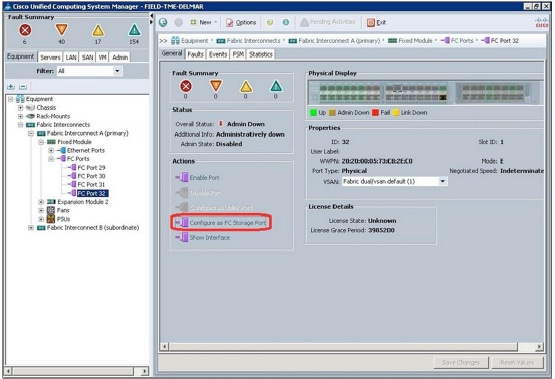

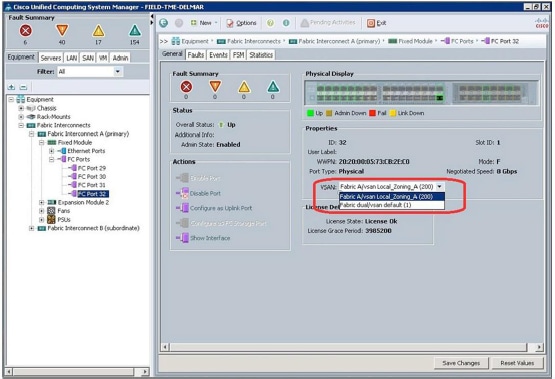

In Cisco UCS Manager, select the Equipment tab in the navigation pane. Expand the Equipment node and then expand the Fabric Interconnects node and then the Fabric Interconnect A (primary) node. Expand the module to which the FC or FCoE direct-attach storage device has been attached (this document assumes that the port has already been configured as FC rather than Ethernet). Then expand the FC Ports node and select the FC port to which the storage is connected. In the main window, select the General tab, and the Physical Display pane for the port will be displayed.

In the main window, in the Actions section, click Configure as FC Storage Port. A confirmation window will appear; click Yes. A success window will appear; click OK.

In the main window, in the Properties section, click the VSAN drop-down menu and set the port to the VSAN created in the previous section.

Note: If the VSAN was not previously created in the Storage Cloud container, the VSAN will not be available in the drop-down menu to assign to the direct-attach storage port.

When the port is set to the desired VSAN, click Save Changes. A success window will appear; click OK.

Repeat the preceding steps for each direct-attach storage port connected to Fabric Interconnect A and Fabric Interconnect B.

Configure Local Zoning in a New Service Profile

Local zoning can be applied to both a new service profile and an existing service profile. This section describes the creation of local zoning on a new service profile.

Note: You must select the Create Service Profile (expert) wizard if you want to create local zoning when you create a service profile.

Note: This document assumes that the reader understands the service profile creation process. The steps presented here are for the Storage and Zoning sections of the Create Service Profile (expert) wizard; this document does not include steps for the other sections.

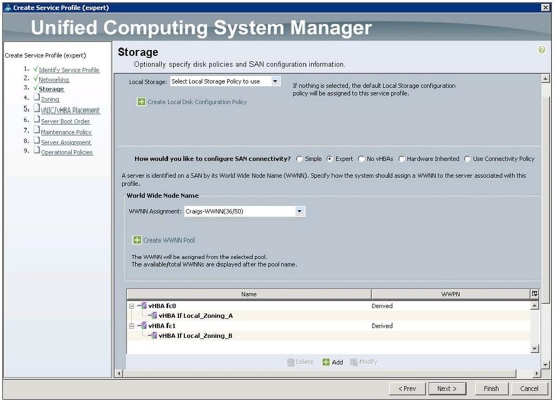

Create a service profile using the Expert wizard; complete all sections as desired until you reach the Storage section.

When the Storage section is displayed, complete the configuration as shown here.

- Local Storage: Configure the storage as desired if local storage is to be used.

- How would you like to configure SAN connectivity: Select the Expert radio button.

- World Wide Node Name: Select the WWNN Pool from the drop-down menu.

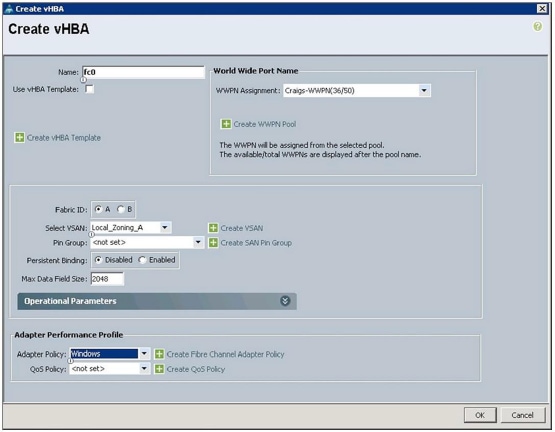

Click the green + button at the bottom of the window to create virtual host bus adapters (vHBAs); the Create vHBA window will appear.

Complete the configuration as follows:

- Name: Enter a name for the vHBA.

- World Wide Port Name: Select WWPN Pool from the drop-down menu.

- Fabric ID: Choose Fabric A.

- Select VSAN: Choose the VSAN created in the Storage Cloud container.

- Pin Group: Choose <not set> because you are using local zoning.

- Persistent Binding: Select either Disabled or Enabled.

- Max Data Field Size: Use the default or set size as desired.

- Adapter Policy: Set the policy as desired.

- QoS Policy: Set the policy as desired.

When the configuration is complete, click OK.

Repeat the preceding steps; click the green + button for the second vHBA and choose Fabric B.

When vHBA creation has been completed for each vHBA, click Next.

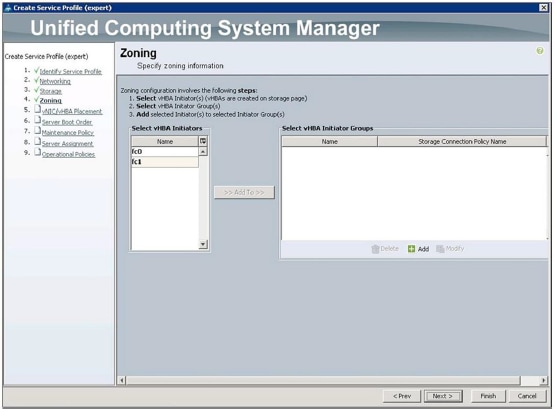

The Zoning section of the wizard will now appear. This section is where you configure local zoning. Creation of local zones is a multistep process. The primary constructs of local zoning are as follows:

- Storage Connection Policy: This container configures the FC target endpoints that will be assigned to the vHBA Initiator Group. This container also specifies whether the vHBA Initiator Group type will be Single Initiator Single Target or Single Initiator Multiple Targets.

- Single Initiator Single Target: This type specifies that each zone includes a single vHBA WWPN and a single storage target WWPN.

Example: vHBA_0, Storage_Target_1, Storage_Target_2

Zone1: vHBA_0

Storage_Target_1

Zone2: vHBA_0

Storage_Target_2

- Single Initiator Multiple Targets: This type specifies that each zone includes a single vHBA WWPN with multiple storage target WWPNs.

- FC Target Endpoints: This construct defines the WWPN of the FC or FCoE storage target that is directly attached to the fabric interconnect.

- vHBA Initiator Group: This container is the "Zone" that will include the vHBA initiator WWPN along with the storage target endpoint WWPN defined in the storage connection policy.

Example: vHBA_0, Storage_Target_1, Storage_Target_2

Zone1: vHBA_0

Storage_Target_1

Storage_Target_2
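The difference between the two zoning types can be expressed as a small function. This Python sketch only models which members end up in each zone, using the placeholder names from the examples above; it is not how Cisco UCS Manager builds zones internally, and the function and constant names are hypothetical.

```python
SIST = "single-initiator-single-target"
SIMT = "single-initiator-multiple-targets"


def build_zones(initiator: str, targets: list, zoning_type: str = SIST) -> list:
    """Return the zones (lists of members) implied by one initiator and its targets."""
    if zoning_type == SIST:
        # One zone per target, each pairing the initiator with that target.
        return [[initiator, target] for target in targets]
    if zoning_type == SIMT:
        # A single zone holding the initiator and every target.
        return [[initiator, *targets]] if targets else []
    raise ValueError(f"unknown zoning type: {zoning_type!r}")
```

The default argument mirrors the default zoning type in Cisco UCS Manager, Single Initiator Single Target.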

Note: Have the storage target endpoint WWPNs available before proceeding.

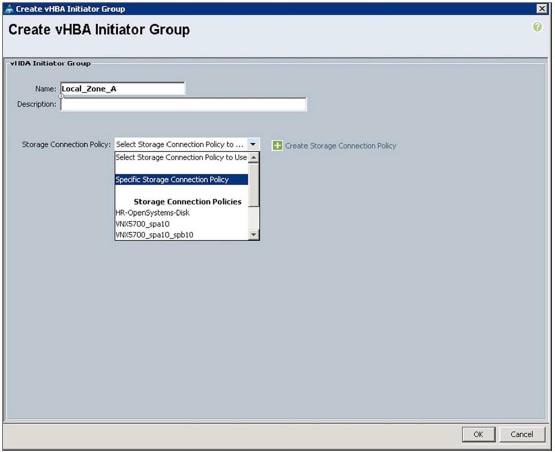

Click the green + button at the bottom of the Select vHBA Initiator Groups window; the Create vHBA Initiator Group window will appear.

Complete the vHBA Initiator Group fields as follows:

- Name: Enter a name for the vHBA initiator group.

Note: The vHBA initiator group name can consist of up to 16 characters.

- Storage Connection Policy: Choose Specific Storage Connection Policy from the drop-down menu.

Note: You can also choose a previously created storage connection policy from the Storage Connection Policy drop-down menu. In this document, however, a specific storage connection policy will be created for this service profile.

After you choose Specific Storage Connection Policy from the drop-down menu, the Create vHBA Initiator Group window will expand to show additional fields for building the Storage Connection Policy.

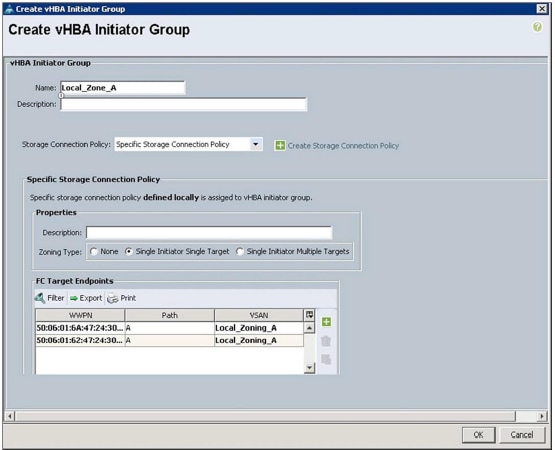

Complete the fields as follows:

- Zoning Type: In this field, choose Single Initiator Single Target or Single Initiator Multiple Targets. As shown in the examples presented earlier, the difference between these two options is that with Single Initiator Single Target, a separate zone is created for every storage target endpoint, and with Single Initiator Multiple Targets, one zone is created that includes all the storage target endpoints. After service profile creation is complete, output from both the GUI and the CLI will illustrate these results.

For the purposes of this document, click the radio button next to Single Initiator Single Target.

Note: The default zoning type is Single Initiator Single Target.

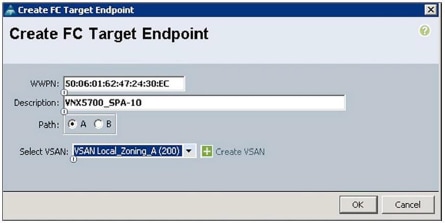

- FC Target Endpoints: This pane is where the WWPNs of the storage target endpoints are added. Click the green + button to the right of the FC Target Endpoints pane; the Create FC Target Endpoint window will appear.

Complete the fields as follows:

- WWPN: Enter the WWPN of the storage target endpoint connected to Fabric Interconnect A.

- Description: Enter a description for the storage target endpoint. In the screen image, the storage model (VNX5700) and the service processor designation (SPA-10) have been entered.

- Path: Choose the path that corresponds to the fabric interconnect to which the storage target endpoint is connected.

- Select VSAN: From the drop-down menu, choose the VSAN configured earlier.

After the configuration is complete, click OK.

This step focuses on Fabric A (the storage target endpoint attached to Fabric Interconnect A and VSANs created and assigned to Fabric A). Repeat these steps for each storage target endpoint that you want zoned to the vHBA created and assigned to Fabric A.
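Typing errors in the WWPN field are a common source of zones whose members never log in. The following Python sketch, with a hypothetical function name, checks that a value is a 64-bit WWPN (eight hexadecimal bytes) and returns it in the lowercase, colon-separated form used in the listings in this document. Accepting 16 bare hexadecimal digits is a convenience of this sketch, not a documented Cisco UCS Manager input format.

```python
import re

_WWPN = re.compile(r"[0-9a-f]{2}(?::[0-9a-f]{2}){7}")


def normalize_wwpn(value: str) -> str:
    """Return a WWPN as eight lowercase, colon-separated hex bytes.

    Accepts colon-separated input or 16 bare hex digits; raises ValueError otherwise.
    """
    v = value.strip().lower()
    if re.fullmatch(r"[0-9a-f]{16}", v):
        # Insert a colon between every pair of hex digits.
        v = ":".join(v[i:i + 2] for i in range(0, 16, 2))
    if not _WWPN.fullmatch(v):
        raise ValueError(f"not a valid WWPN: {value!r}")
    return v
```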

The example here uses EMC VNX5700 storage. With EMC VNX technology, Service Processor A (SP-A) and Service Processor B (SP-B) each have multiple ports that are distributed across both fabrics. Specifically, in this example, SPA-10 and SPB-10 are physically attached to Cisco UCS Fabric Interconnect A, and SPA-11 and SPB-11 are physically attached to Cisco UCS Fabric Interconnect B.

The next window shows that storage target endpoints SPA-10 (50:06:01:62:47:24:30:ec) and SPB-10 (50:06:01:6a:47:24:30:ec), both attached to Fabric Interconnect A, have been added to the vHBA initiator group called Local_Zone_A.

Click OK.

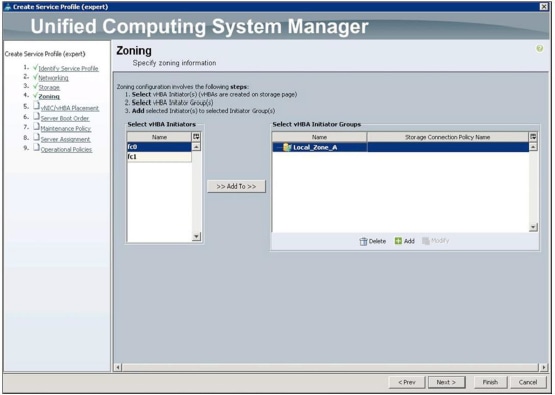

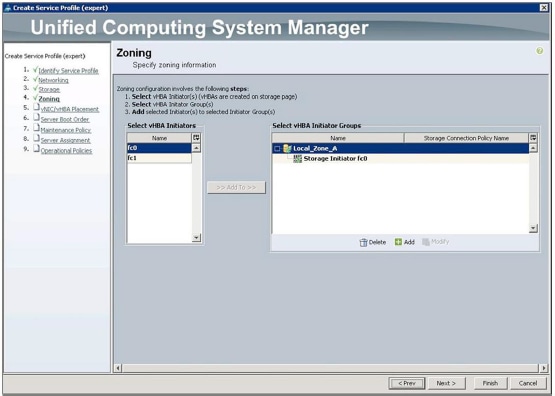

The Zoning window will now appear showing the vHBA initiator group just created. You now need to add the vHBA to the vHBA initiator group. This step is what "zones" the host initiator to the storage targets.

You have been working with Fabric A, so click fc0 (vHBA0) in the Select vHBA Initiators section; this selection will become highlighted.

Next, click Local_Zone_A in the Select vHBA Initiator Groups section; it will now become highlighted.

When both fc0 (vHBA0) and Local_Zone_A are highlighted, the Add To button between the Select vHBA Initiators and Select vHBA Initiator Groups sections will become active.

Click the Add To button.

The fc0 (vHBA0) setting will now appear in the Local_Zone_A vHBA initiator group.

The local zone that includes fc0 (vHBA0) and the storage target endpoints has now been defined.

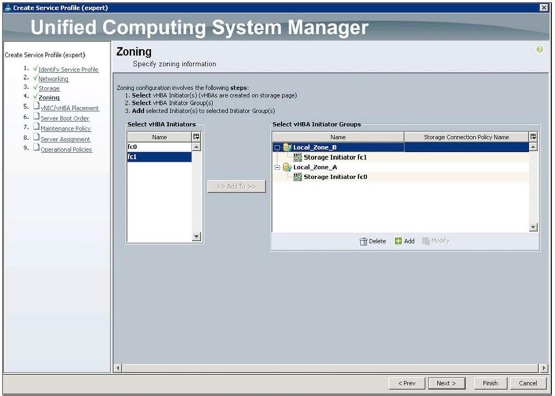

Repeat the preceding steps for Fabric B, creating Local_Zone_B, creating storage target endpoints that are connected to Fabric Interconnect B, specifying Path B, and choosing the VSAN created earlier on Fabric B. Repeat these steps for each storage target endpoint that you want zoned to the vHBA created and assigned to Fabric B.
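The Add To step pairs a vHBA with an initiator group whose storage target endpoints are on the same fabric. The Python sketch below models that pairing as a sanity check: fc0, Local_Zone_A, and the two SPA-10/SPB-10 WWPNs come from this example, while fc1 as the name of the Fabric B vHBA is a hypothetical placeholder. The fabric-matching check reflects the procedure described here; it is not presented as a rule that Cisco UCS Manager enforces.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Vhba:
    name: str
    fabric: str  # "A" or "B"


@dataclass
class InitiatorGroup:
    name: str
    path: str  # fabric path chosen for the group's FC target endpoints
    target_wwpns: list
    initiators: list = field(default_factory=list)


def add_to_group(vhba: Vhba, group: InitiatorGroup) -> None:
    """Model the Add To step, refusing to pair a vHBA with the other fabric's targets."""
    if vhba.fabric != group.path:
        raise ValueError(
            f"{vhba.name} is on fabric {vhba.fabric}, but {group.name} uses path {group.path}"
        )
    if vhba.name not in group.initiators:  # adding the same vHBA twice is a no-op
        group.initiators.append(vhba.name)
```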

After the configuration is complete, the Zoning window will resemble the screen image shown here.

Local_Zone_A and Local_Zone_B have now been defined. Click Next.

Continue to the Server Boot Order page of the Create Service Profile (expert) wizard.

Note: This document assumes that the reader already knows how to configure local boot and boot from SAN.

Note: In a boot-from-SAN configuration, if the boot target WWPNs specified in the Server Boot Order section of the service profile are the same as the storage target endpoints configured in the local zoning steps presented earlier, duplicate zones will be created, because Cisco UCS automatically creates zones for the storage target endpoints specified in the Server Boot Order section.

After you have completed the remaining sections of the Create Service Profile (expert) wizard, click the Finish button to save the new service profile.

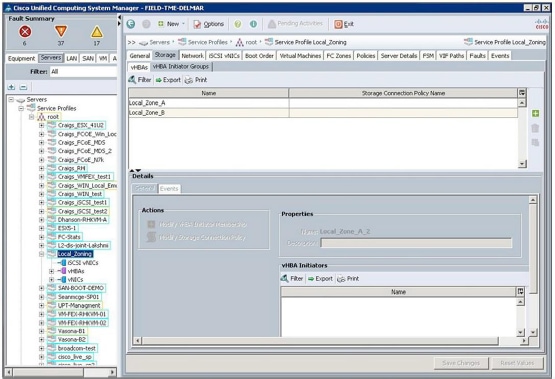

View and Modify vHBA Initiator Groups Using the Cisco UCS Manager GUI

To confirm or modify vHBA Initiator Groups in a completed service profile, select the Server tab in the Cisco UCS Manager main navigation window and expand the Servers node, Service Profiles node, and Root node (or other organization container) in the navigation tree; then select the service profile. In the main Cisco UCS Manager window, select the Storage tab and then select the vHBA Initiator Groups tab. The vHBA Initiator Groups previously created in the service profile creation process will appear in the main Cisco UCS Manager window.

You can view and modify the vHBA Initiator Groups from within this window. To view an existing vHBA Initiator Group, click the name of the group. The details of the vHBA Initiator Group will be displayed in the Details section in the lower part of the screen (use the scroll bar on the right in the Details screen to view the specific Storage Connection Policy details).

Clicking a vHBA Initiator Group name activates the action buttons on the right side of the window (the Trash Can and the Modify button). You can add vHBA Initiator Groups to the service profile by clicking the green + button. To modify an existing vHBA Initiator Group, click the Modify action button below the green + button. All other details of the existing vHBA Initiator Groups can be modified in the Details section of the window.

View Service Profile Local Zones Using the Cisco UCS Manager GUI

Note: Zones are activated on the fabric interconnects only when the service profile is associated with hardware. If the service profile is not associated with hardware, the zones will not appear on the FC Zones tab or in the CLI. When a service profile is disassociated from hardware, the zones previously activated on the fabric interconnects are deactivated and removed from the FC Zones tab and the CLI.

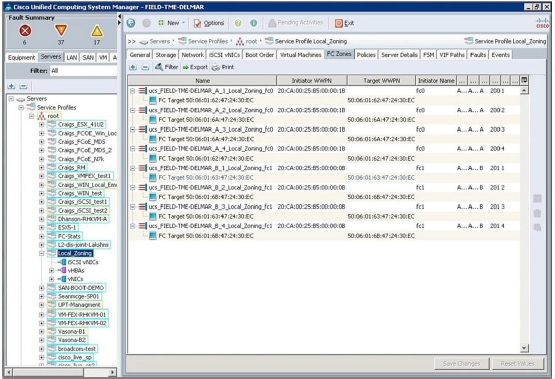

To view the local zones created for the service profile from within Cisco UCS Manager, select the Server tab in the Cisco UCS Manager main navigation window and expand the Servers node, Service Profiles node, and Root node (or other organization container) in the navigation tree; then select the service profile. In the main Cisco UCS Manager window, select the FC Zones tab; a list of zones associated with the service profile will appear.

Note: In the local zones list for this service profile, all zones have been duplicated. As mentioned earlier, this is expected when zones are created on the Zones page and those same initiators and targets are configured in the boot policy.

View Global Local Zones Using the Cisco UCS Manager GUI

To view the local zones created globally in this Cisco UCS domain, select the SAN tab in the navigation pane and select the top-level SAN container in the navigation tree. In the main Cisco UCS Manager window, select the VSANs tab and then select the FC Zones tab. A list of all local zones created in this Cisco UCS domain will appear in the main Cisco UCS Manager window.

View Local Zones Using the CLI

Zones can be viewed from the Cisco UCS fabric interconnect CLI through the nxos scope.

Log into the fabric interconnect CLI through Secure Shell (SSH) or Telnet.

At the CLI prompt, enter the following commands:

FIELD-TME-DELMAR-A# connect nxos

Cisco Nexus Operating System (NX-OS) Software

TAC support: http://www.cisco.com/tac

Copyright (c) 2002-2012, Cisco Systems, Inc. All rights reserved.

The copyrights to certain works contained in this software are

owned by other third parties and used and distributed under license.

Certain components of this software are licensed under the GNU

General Public License (GPL) version 2.0 or the GNU Lesser General

Public License (LGPL) Version 2.1. A copy of each such license is

available at http://www.opensource.org/licenses/gpl-2.0.php and

http://www.opensource.org/licenses/lgpl-2.1.php

FIELD-TME-DELMAR-A(nxos)# show zone

zone name ucs_FIELD-TME-DELMAR_A_1_Local_Zoning_fc0 vsan 200

pwwn 20:ca:00:25:b5:00:00:1b

pwwn 50:06:01:62:47:24:30:ec

zone name ucs_FIELD-TME-DELMAR_A_2_Local_Zoning_fc0 vsan 200

pwwn 20:ca:00:25:b5:00:00:1b

pwwn 50:06:01:6a:47:24:30:ec

zone name ucs_FIELD-TME-DELMAR_A_3_Local_Zoning_fc0 vsan 200

pwwn 20:ca:00:25:b5:00:00:1b

pwwn 50:06:01:6a:47:24:30:ec

zone name ucs_FIELD-TME-DELMAR_A_4_Local_Zoning_fc0 vsan 200

pwwn 20:ca:00:25:b5:00:00:1b

pwwn 50:06:01:62:47:24:30:ec

FIELD-TME-DELMAR-A(nxos)#

The results of the show zone command will appear. This system currently contains only the local zones from the service profile created in this document, so each zone is displayed in Single Initiator Single Target format, the zoning type chosen in that service profile.

If Single Initiator Multiple Targets had been chosen, the results of the show zone command would display the following:

zone name ucs_FIELD-TME-DELMAR_A_1_Local_Zoning_fc0 vsan 200

pwwn 20:ca:00:25:b5:00:00:1b

pwwn 50:06:01:62:47:24:30:ec

pwwn 50:06:01:6a:47:24:30:ec

zone name ucs_FIELD-TME-DELMAR_A_2_Local_Zoning_fc0 vsan 200

pwwn 20:ca:00:25:b5:00:00:1b

pwwn 50:06:01:62:47:24:30:ec

zone name ucs_FIELD-TME-DELMAR_A_3_Local_Zoning_fc0 vsan 200

pwwn 20:ca:00:25:b5:00:00:1b

pwwn 50:06:01:6a:47:24:30:ec

In this listing, the first zone shows three WWPNs: the WWPN of the vHBA initiator (pwwn 20:ca:00:25:b5:00:00:1b) and the two WWPNs of the storage targets (pwwn 50:06:01:62:47:24:30:ec and pwwn 50:06:01:6a:47:24:30:ec).

The next two zones are Single Initiator Single Target. These zones were automatically built by Cisco UCS for the storage targets specified in the boot policy. As mentioned earlier, these zones are duplicates. The Single Initiator Multiple Targets zone would suffice; however, this is how the Cisco UCS zoning construct is currently designed.
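To spot such overlapping zones in a larger listing, the following Python sketch flags each zone whose members are all contained in another zone, or that exactly repeats an earlier zone. It takes a dictionary of zone name to member pWWNs within one VSAN, which can be assembled from `show zone` output; the function name and the short zone names in the usage are hypothetical. The result is only a report: these zones are created and managed by Cisco UCS.

```python
def find_redundant_zones(zones: dict) -> list:
    """Return the names of zones whose members all appear in another zone.

    zones maps zone name -> list of member pWWNs, all within one VSAN.
    When two zones have identical members, only the later one is reported.
    """
    names = list(zones)
    members = {name: frozenset(w.lower() for w in zones[name]) for name in names}
    redundant = []
    for i, name in enumerate(names):
        for j, other in enumerate(names):
            if i == j:
                continue
            # Strict subset of another zone, or an exact repeat of an earlier zone.
            if members[name] < members[other] or (members[name] == members[other] and j < i):
                redundant.append(name)
                break
    return redundant
```

Applied to the Single Initiator Multiple Targets listing above, the two boot-policy zones are reported because both are contained in the first zone; applied to the earlier Single Initiator Single Target listing, the third and fourth zones are reported as repeats of the second and first.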

To see the zones with an indication of whether the WWPNs are logged in, enter the following command:

FIELD-TME-DELMAR-A(nxos)# show zone active

zone name ucs_FIELD-TME-DELMAR_A_1_Local_Zoning_fc0 vsan 200

* fcid 0xcf0001 [pwwn 20:ca:00:25:b5:00:00:1b]

* fcid 0xcf00ef [pwwn 50:06:01:62:47:24:30:ec]

zone name ucs_FIELD-TME-DELMAR_A_2_Local_Zoning_fc0 vsan 200

* fcid 0xcf0001 [pwwn 20:ca:00:25:b5:00:00:1b]

* fcid 0xcf01ef [pwwn 50:06:01:6a:47:24:30:ec]

zone name ucs_FIELD-TME-DELMAR_A_3_Local_Zoning_fc0 vsan 200

* fcid 0xcf0001 [pwwn 20:ca:00:25:b5:00:00:1b]

* fcid 0xcf01ef [pwwn 50:06:01:6a:47:24:30:ec]

zone name ucs_FIELD-TME-DELMAR_A_4_Local_Zoning_fc0 vsan 200

* fcid 0xcf0001 [pwwn 20:ca:00:25:b5:00:00:1b]

* fcid 0xcf00ef [pwwn 50:06:01:62:47:24:30:ec]

The listing shows the zones and the WWPNs that are active and logged in (an asterisk [*] precedes the fcid value of WWPNs that are logged in).
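In a longer listing, the asterisk is easy to miss. This Python sketch, with a hypothetical function name, records for each zone which members are logged in. It assumes the `* fcid ... [pwwn ...]` format shown above for logged-in members, and it assumes that a member that is not logged in appears as a bare `pwwn ...` line without an asterisk.

```python
import re

ZONE_LINE = re.compile(r"^\s*zone name (\S+) vsan (\d+)")
# Logged-in members: "* fcid 0x... [pwwn ...]"; other members assumed to be "pwwn ...".
MEMBER_LINE = re.compile(
    r"^\s*(\*)?\s*(?:fcid 0x[0-9a-fA-F]+ \[)?pwwn ([0-9a-fA-F]{2}(?::[0-9a-fA-F]{2}){7})"
)


def login_status(output: str) -> dict:
    """Map zone name -> list of (pWWN, logged_in) pairs from `show zone active`."""
    status = {}
    zone = None
    for line in output.splitlines():
        header = ZONE_LINE.match(line)
        if header:
            zone = header.group(1)
            status[zone] = []
            continue
        member = MEMBER_LINE.match(line)
        if member and zone is not None:
            status[zone].append((member.group(2).lower(), member.group(1) == "*"))
    return status
```

Any pair with `False` points at an initiator or target that should be checked before troubleshooting the zone itself.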

Deciphering the Zone Name

Consider the following zone name:

zone name ucs_FIELD-TME-DELMAR_A_1_Local_Zoning_fc0 vsan 200

The name can be deciphered as follows:

- ucs: This is a Cisco UCS local zone.

- FIELD-TME-DELMAR_A: This zone is on Cisco UCS domain FIELD-TME-DELMAR on Fabric Interconnect A.

- 1: This is zone number 1. Zones are numbered sequentially starting with 1.

- Local_Zoning: This is the service profile name.

- fc0: This is the vHBA name in the service profile attached to Fabric A.
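The same breakdown can be applied mechanically with a regular expression. This Python sketch, with a hypothetical function name, assumes that the vHBA name contains no underscore and that the domain name does not itself contain a `_A_<number>_` or `_B_<number>_` sequence. Underscores in the service profile name (such as Local_Zoning) are handled by matching the vHBA name from the end.

```python
import re

ZONE_NAME = re.compile(
    r"^ucs_(?P<domain>.+?)_(?P<fabric>[AB])_(?P<number>\d+)_"
    r"(?P<service_profile>.+)_(?P<vhba>[^_]+)$"
)


def decipher_zone_name(name: str) -> dict:
    """Split a Cisco UCS local zone name into domain, fabric, number, profile, and vHBA."""
    match = ZONE_NAME.match(name.strip())
    if match is None:
        raise ValueError(f"not a Cisco UCS local zone name: {name!r}")
    parts = match.groupdict()
    parts["number"] = int(parts["number"])  # zones are numbered from 1
    return parts
```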

Cisco UCS Local Zoning Limitations

Note the following maximum values:

- Maximum number of zones: 8000 per VSAN and 8000 total per fabric.

- Maximum number of target ports per service profile: 64.
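When planning many service profiles, the zone count can be estimated from the zoning type: with Single Initiator Single Target, each initiator gets one zone per target; with Single Initiator Multiple Targets, each initiator gets one zone. The following Python sketch applies that arithmetic and checks the totals against the maximums listed above. The function names are hypothetical, and the zones that Cisco UCS creates automatically from the boot policy are not included in the estimate.

```python
MAX_ZONES_PER_VSAN = 8000
MAX_ZONES_PER_FABRIC = 8000
MAX_TARGET_PORTS_PER_PROFILE = 64


def zones_for_group(initiators: int, targets: int, single_target: bool = True) -> int:
    """Zones produced by one vHBA initiator group (boot-policy zones not included)."""
    if targets == 0:
        return 0
    return initiators * targets if single_target else initiators


def check_limits(zones_by_vsan: dict, targets_by_profile: dict) -> list:
    """zones_by_vsan maps (fabric, vsan_id) -> zone count; targets_by_profile maps
    service profile name -> number of target ports. Returns a list of violations."""
    problems = []
    per_fabric = {}
    for (fabric, vsan_id), count in zones_by_vsan.items():
        per_fabric[fabric] = per_fabric.get(fabric, 0) + count
        if count > MAX_ZONES_PER_VSAN:
            problems.append(f"VSAN {vsan_id} on fabric {fabric}: {count} zones")
    for fabric, total in per_fabric.items():
        if total > MAX_ZONES_PER_FABRIC:
            problems.append(f"fabric {fabric}: {total} zones")
    for profile, ports in targets_by_profile.items():
        if ports > MAX_TARGET_PORTS_PER_PROFILE:
            problems.append(f"service profile {profile}: {ports} target ports")
    return problems
```

For the Fabric A example in this document, `zones_for_group(1, 2)` gives 2; the `show zone` listing contains 4 zones because the boot policy added two more.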

For More Information

See the "Configuring Fibre Channel Zoning" section of the Cisco UCS Manager GUI Configuration Guide:

http://www.cisco.com/en/US/products/ps10281/products_installation_and_configuration_guides_list.html.

Revision History

| Revision | Publish Date | Comments |
|---|---|---|
| 1.0 | 20-May-2013 | Initial Release |
