更換Ultra-M UCS 240M4伺服器中的主機板 — vEPC

下載選項

無偏見用語

本產品的文件集力求使用無偏見用語。針對本文件集的目的,無偏見係定義為未根據年齡、身心障礙、性別、種族身分、民族身分、性別傾向、社會經濟地位及交織性表示歧視的用語。由於本產品軟體使用者介面中硬式編碼的語言、根據 RFP 文件使用的語言,或引用第三方產品的語言,因此本文件中可能會出現例外狀況。深入瞭解思科如何使用包容性用語。

關於此翻譯

思科已使用電腦和人工技術翻譯本文件,讓全世界的使用者能夠以自己的語言理解支援內容。請注意,即使是最佳機器翻譯,也不如專業譯者翻譯的內容準確。Cisco Systems, Inc. 對這些翻譯的準確度概不負責,並建議一律查看原始英文文件(提供連結)。

目錄

簡介

本文檔介紹在託管StarOS虛擬網路功能(VNF)的Ultra-M設定中更換有故障的伺服器的主機板所需的步驟。

背景資訊

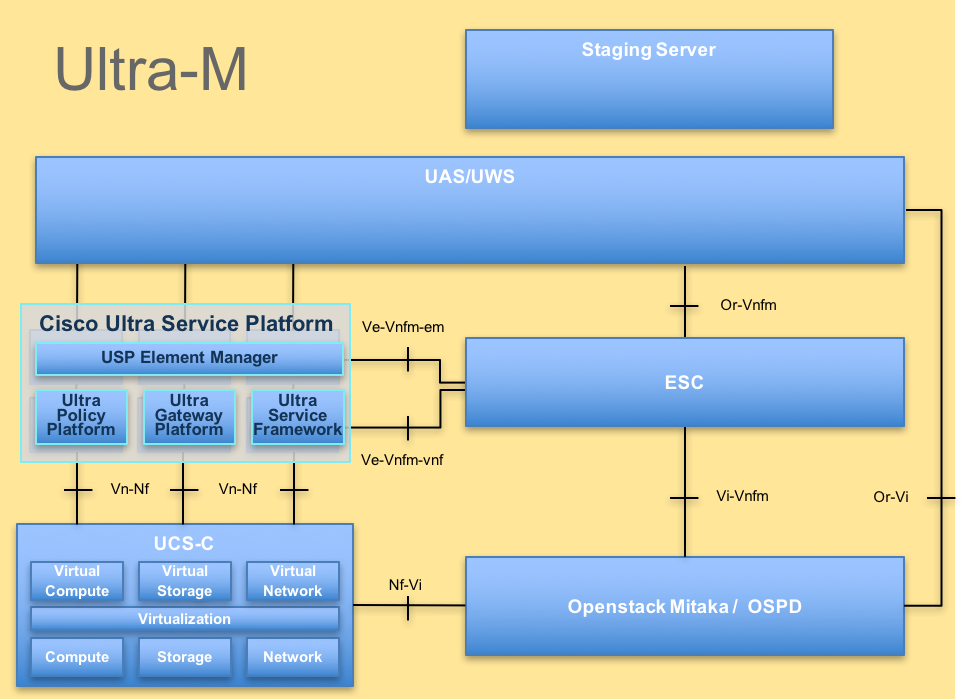

Ultra-M是經過預先打包和驗證的虛擬化移動資料包核心解決方案,旨在簡化VNF的部署。OpenStack是適用於Ultra-M的虛擬化基礎架構管理員(VIM),由以下節點型別組成:

- 計算

- 對象儲存磁碟 — 計算(OSD — 計算)

- 控制器

- OpenStack平台 — 導向器(OSPD)

Ultra-M的高級體系結構及涉及的元件如下圖所示:

UltraM體系結構

UltraM體系結構

本文檔面向熟悉Cisco Ultra-M平台的思科人員,詳細介紹在伺服器更換主機板時在OpenStack和StarOS VNF級別上需要執行的步驟。

附註:Ultra M 5.1.x版本用於定義本文檔中的過程。

縮寫

| VNF | 虛擬網路功能 |

| CF | 控制功能 |

| SF | 服務功能 |

| ESC | 彈性服務控制器 |

| 澳門幣 | 程式方法 |

| OSD | 對象儲存磁碟 |

| 硬碟 | 硬碟驅動器 |

| 固態硬碟 | 固態驅動器 |

| VIM | 虛擬基礎架構管理員 |

| 虛擬機器 | 虛擬機器 |

| EM | 元素管理器 |

| UAS | Ultra自動化服務 |

| UUID | 通用唯一識別符號 |

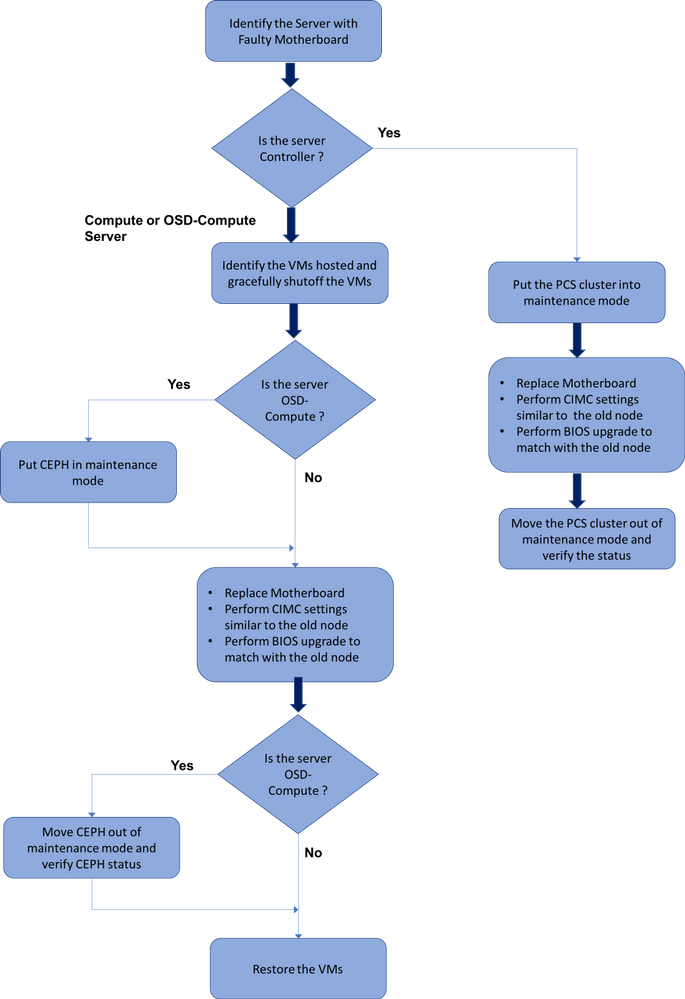

MoP的工作流程

替換過程的高級工作流

替換過程的高級工作流

Ultra-M設定中的主機板更換

在Ultra-M設定中,在以下伺服器型別中可能需要更換主機板:計算、OSD計算和控制器。

附註:更換主機板後,會更換安裝有OpenStack的啟動盤。因此,無需將節點重新新增到超雲中。更換活動結束後,一旦伺服器接通電源,它將自行註冊回重疊雲堆疊。

計算節點中的主機板更換

在活動之前,託管在「計算」節點中的VM會正常關閉。更換主機板後,VM將恢復回來。

確定計算節點中託管的VM

確定託管在Compute Server上的VM。可能發生兩種情況:

計算伺服器僅包含SF VM:

[stack@director ~]$ nova list --field name,host | grep compute-10

| 49ac5f22-469e-4b84-badc-031083db0533 | VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d |

pod1-compute-10.localdomain |

計算伺服器包含虛擬機器的CF/ESC/EM/UAS組合:

[stack@director ~]$ nova list --field name,host | grep compute-8

| 507d67c2-1d00-4321-b9d1-da879af524f8 | VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea | pod1-compute-8.localdomain |

| f9c0763a-4a4f-4bbd-af51-bc7545774be2 | VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229 | pod1-compute-8.localdomain |

| 75528898-ef4b-4d68-b05d-882014708694 | VNF2-ESC-ESC-0 | pod1-compute-8.localdomain |

| f5bd7b9c-476a-4679-83e5-303f0aae9309 | VNF2-UAS-uas-0 | pod1-compute-8.localdomain |

附註:此處顯示的輸出中,第一列與UUID相對應,第二列是VM名稱,第三列是存在VM的主機名。此輸出的引數將用於後續部分。

正常斷電

案例1.計算節點僅承載SF VM

登入到StarOS VNF並確定與SF VM對應的卡。使用從識別計算節點中託管的VM部分識別的SF VM的UUID,並識別與UUID對應的卡:

[local]VNF2# show card hardware

Tuesday might 08 16:49:42 UTC 2018

<snip>

Card 8:

Card Type : 4-Port Service Function Virtual Card

CPU Packages : 26 [#0, #1, #2, #3, #4, #5, #6, #7, #8, #9, #10, #11, #12, #13, #14, #15, #16, #17, #18, #19, #20, #21, #22, #23, #24, #25]

CPU Nodes : 2

CPU Cores/Threads : 26

Memory : 98304M (qvpc-di-large)

UUID/Serial Number : 49AC5F22-469E-4B84-BADC-031083DB0533

<snip>

檢查卡的狀態:

[local]VNF2# show card table

Tuesday might 08 16:52:53 UTC 2018

Slot Card Type Oper State SPOF Attach

----------- -------------------------------------- ------------- ---- ------

1: CFC Control Function Virtual Card Active No

2: CFC Control Function Virtual Card Standby -

3: FC 4-Port Service Function Virtual Card Active No

4: FC 4-Port Service Function Virtual Card Active No

5: FC 4-Port Service Function Virtual Card Active No

6: FC 4-Port Service Function Virtual Card Active No

7: FC 4-Port Service Function Virtual Card Active No

8: FC 4-Port Service Function Virtual Card Active No

9: FC 4-Port Service Function Virtual Card Active No

10: FC 4-Port Service Function Virtual Card Standby -

如果卡處於活動狀態,請將卡移至備用狀態:

[local]VNF2# card migrate from 8 to 10

登入到與VNF對應的ESC節點並檢查SF VM的狀態:

[admin@VNF2-esc-esc-0 ~]$ cd /opt/cisco/esc/esc-confd/esc-cli

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli get esc_datamodel | egrep --color "<state>|<vm_name>|<vm_id>|<deployment_name>"

<snip>

<state>SERVICE_ACTIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229</vm_name>

<state>VM_ALIVE_STATE</state>

<vm_name> VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d</vm_name>

<state>VM_ALIVE_STATE</state>

<snip>

使用其VM名稱停止SF VM。(VM名稱,請參閱識別計算節點中託管的VM部分😞

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli vm-action STOP VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d

停止後,VM必須進入SHUTOFF狀態:

[admin@VNF2-esc-esc-0 ~]$ cd /opt/cisco/esc/esc-confd/esc-cli

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli get esc_datamodel | egrep --color "<state>|<vm_name>|<vm_id>|<deployment_name>"

<snip>

<state>SERVICE_ACTIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229</vm_name>

<state>VM_ALIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_c3_0_3e0db133-c13b-4e3d-ac14-

<state>VM_ALIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d</vm_name>

<state>VM_SHUTOFF_STATE</state>

<snip>

案例2.計算節點主機CF/ESC/EM/UAS

登入到StarOS VNF並確定與CF VM對應的卡。使用識別計算節點中託管的VM部分中標識的CF VM的UUID,並查詢與UUID對應的卡:

[local]VNF2# show card hardware

Tuesday might 08 16:49:42 UTC 2018

<snip>

Card 2:

Card Type : Control Function Virtual Card

CPU Packages : 8 [#0, #1, #2, #3, #4, #5, #6, #7]

CPU Nodes : 1

CPU Cores/Threads : 8

Memory : 16384M (qvpc-di-large)

UUID/Serial Number : F9C0763A-4A4F-4BBD-AF51-BC7545774BE2

<snip>

檢查卡的狀態:

[local]VNF2# show card table

Tuesday might 08 16:52:53 UTC 2018

Slot Card Type Oper State SPOF Attach

----------- -------------------------------------- ------------- ---- ------

1: CFC Control Function Virtual Card Standby -

2: CFC Control Function Virtual Card Active No

3: FC 4-Port Service Function Virtual Card Active No

4: FC 4-Port Service Function Virtual Card Active No

5: FC 4-Port Service Function Virtual Card Active No

6: FC 4-Port Service Function Virtual Card Active No

7: FC 4-Port Service Function Virtual Card Active No

8: FC 4-Port Service Function Virtual Card Active No

9: FC 4-Port Service Function Virtual Card Active No

10: FC 4-Port Service Function Virtual Card Standby -

如果卡處於活動狀態,請將卡移至備用狀態:

[local]VNF2# card migrate from 2 to 1

登入到與VNF對應的ESC節點並檢查VM的狀態:

[admin@VNF2-esc-esc-0 ~]$ cd /opt/cisco/esc/esc-confd/esc-cli

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli get esc_datamodel | egrep --color "<state>|<vm_name>|<vm_id>|<deployment_name>"

<snip>

<state>SERVICE_ACTIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229</vm_name>

<state>VM_ALIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_c3_0_3e0db133-c13b-4e3d-ac14-

<state>VM_ALIVE_STATE</state>

<deployment_name>VNF2-DEPLOYMENT-em</deployment_name>

<vm_id>507d67c2-1d00-4321-b9d1-da879af524f8</vm_id>

<vm_id>dc168a6a-4aeb-4e81-abd9-91d7568b5f7c</vm_id>

<vm_id>9ffec58b-4b9d-4072-b944-5413bf7fcf07</vm_id>

<state>SERVICE_ACTIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea</vm_name>

<state>VM_ALIVE_STATE</state>

<snip>

使用其VM名稱逐一停止CF和EM VM。(VM名稱,請參閱識別計算節點中託管的VM部分😞

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli vm-action STOP VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli vm-action STOP VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea

停止後,VM必須進入SHUTOFF狀態:

[admin@VNF2-esc-esc-0 ~]$ cd /opt/cisco/esc/esc-confd/esc-cli

[admin@VNF2-esc-esc-0 esc-cli]$ ./esc_nc_cli get esc_datamodel | egrep --color "<state>|<vm_name>|<vm_id>|<deployment_name>"

<snip>

<state>SERVICE_ACTIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229</vm_name>

<state>VM_SHUTOFF_STATE</state>

<vm_name>VNF2-DEPLOYM_c3_0_3e0db133-c13b-4e3d-ac14-

<state>VM_ALIVE_STATE</state>

<deployment_name>VNF2-DEPLOYMENT-em</deployment_name>

<vm_id>507d67c2-1d00-4321-b9d1-da879af524f8</vm_id>

<vm_id>dc168a6a-4aeb-4e81-abd9-91d7568b5f7c</vm_id>

<vm_id>9ffec58b-4b9d-4072-b944-5413bf7fcf07</vm_id>

<state>SERVICE_ACTIVE_STATE</state>

<vm_name>VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea</vm_name>

VM_SHUTOFF_STATE

<snip>

登入到計算節點中託管的ESC並檢查它是否處於主狀態。如果是,將ESC切換到備用模式:

[admin@VNF2-esc-esc-0 esc-cli]$ escadm status

0 ESC status=0 ESC Master Healthy

[admin@VNF2-esc-esc-0 ~]$ sudo service keepalived stop

Stopping keepalived: [ OK ]

[admin@VNF2-esc-esc-0 ~]$ escadm status

1 ESC status=0 In SWITCHING_TO_STOP state. Please check status after a while.

[admin@VNF2-esc-esc-0 ~]$ sudo reboot

Broadcast message from admin@vnf1-esc-esc-0.novalocal

(/dev/pts/0) at 13:32 ...

The system is going down for reboot NOW!

更換主機板

有關更換UCS C240 M4伺服器主機板的步驟,請參閱:Cisco UCS C240 M4伺服器安裝和服務指南

使用CIMC IP登入到伺服器。

如果韌體與以前使用的推薦版本不一致,請執行BIOS升級。此處提供了BIOS升級步驟:Cisco UCS C系列機架式伺服器BIOS升級指南

恢復虛擬機器

案例1.計算節點僅承載SF VM

SF VM在新星清單中處於錯誤狀態:

[stack@director ~]$ nova list |grep VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d

| 49ac5f22-469e-4b84-badc-031083db0533 | VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d | ERROR | - | NOSTATE |

從ESC恢復SF VM:

[admin@VNF2-esc-esc-0 ~]$ sudo /opt/cisco/esc/esc-confd/esc-cli/esc_nc_cli recovery-vm-action DO VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d

[sudo] password for admin:

Recovery VM Action

/opt/cisco/esc/confd/bin/netconf-console --port=830 --host=127.0.0.1 --user=admin --privKeyFile=/root/.ssh/confd_id_dsa --privKeyType=dsa --rpc=/tmp/esc_nc_cli.ZpRCGiieuW

<?xml version="1.0" encoding="UTF-8"?>

<rpc-reply xmlns="urn:ietf:params:xml:ns:netconf:base:1.0" message-id="1">

<ok/>

</rpc-reply>

監控yangesc.log:

admin@VNF2-esc-esc-0 ~]$ tail -f /var/log/esc/yangesc.log

…

14:59:50,112 07-Nov-2017 WARN Type: VM_RECOVERY_COMPLETE

14:59:50,112 07-Nov-2017 WARN Status: SUCCESS

14:59:50,112 07-Nov-2017 WARN Status Code: 200

14:59:50,112 07-Nov-2017 WARN Status Msg: Recovery: Successfully recovered VM [VNF2-DEPLOYM_s9_0_8bc6cc60-15d6-4ead-8b6a-10e75d0e134d].

確保SF卡在VNF中作為備用SF啟動。

案例2.計算節點承載UAS、ESC、EM和CF

恢復UAS虛擬機器

檢查UAS VM在新星清單中的狀態並將其刪除:

[stack@director ~]$ nova list | grep VNF2-UAS-uas-0

| 307a704c-a17c-4cdc-8e7a-3d6e7e4332fa | VNF2-UAS-uas-0 | ACTIVE | - | Running | VNF2-UAS-uas-orchestration=172.168.11.10; VNF2-UAS-uas-management=172.168.10.3

[stack@tb5-ospd ~]$ nova delete VNF2-UAS-uas-0

Request to delete server VNF2-UAS-uas-0 has been accepted.

要恢復AutoVNF-UAS虛擬機器,請運行UAS-check指令碼以檢查狀態。它必須報告錯誤。然後使用 — fix選項再次運行,以重新建立缺失的UAS VM:

[stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts/

[stack@director scripts]$ ./uas-check.py auto-vnf VNF2-UAS

2017-12-08 12:38:05,446 - INFO: Check of AutoVNF cluster started

2017-12-08 12:38:07,925 - INFO: Instance 'vnf1-UAS-uas-0' status is 'ERROR'

2017-12-08 12:38:07,925 - INFO: Check completed, AutoVNF cluster has recoverable errors

[stack@director scripts]$ ./uas-check.py auto-vnf VNF2-UAS --fix

2017-11-22 14:01:07,215 - INFO: Check of AutoVNF cluster started

2017-11-22 14:01:09,575 - INFO: Instance VNF2-UAS-uas-0' status is 'ERROR'

2017-11-22 14:01:09,575 - INFO: Check completed, AutoVNF cluster has recoverable errors

2017-11-22 14:01:09,778 - INFO: Removing instance VNF2-UAS-uas-0'

2017-11-22 14:01:13,568 - INFO: Removed instance VNF2-UAS-uas-0'

2017-11-22 14:01:13,568 - INFO: Creating instance VNF2-UAS-uas-0' and attaching volume ‘VNF2-UAS-uas-vol-0'

2017-11-22 14:01:49,525 - INFO: Created instance ‘VNF2-UAS-uas-0'

登入到AutoVNF-UAS。等待幾分鐘,然後您會看到UAS返回正常狀態:

VNF2-autovnf-uas-0#show uas

uas version 1.0.1-1

uas state ha-active

uas ha-vip 172.17.181.101

INSTANCE IP STATE ROLE

-----------------------------------

172.17.180.6 alive CONFD-SLAVE

172.17.180.7 alive CONFD-MASTER

172.17.180.9 alive NA

恢復ESC虛擬機器

從新星清單中檢查ESC VM的狀態並將其刪除:

stack@director scripts]$ nova list |grep ESC-1

| c566efbf-1274-4588-a2d8-0682e17b0d41 | VNF2-ESC-ESC-1 | ACTIVE | - | Running | VNF2-UAS-uas-orchestration=172.168.11.14; VNF2-UAS-uas-management=172.168.10.4 |

[stack@director scripts]$ nova delete VNF2-ESC-ESC-1

Request to delete server VNF2-ESC-ESC-1 has been accepted.

在AutoVNF-UAS中查詢ESC部署事務,並在事務的日誌中查詢boot_vm.py命令列以建立ESC例項:

ubuntu@VNF2-uas-uas-0:~$ sudo -i

root@VNF2-uas-uas-0:~# confd_cli -u admin -C

Welcome to the ConfD CLI

admin connected from 127.0.0.1 using console on VNF2-uas-uas-0

VNF2-uas-uas-0#show transaction

TX ID TX TYPE DEPLOYMENT ID TIMESTAMP STATUS

-----------------------------------------------------------------------------------------------------------------------------

35eefc4a-d4a9-11e7-bb72-fa163ef8df2b vnf-deployment VNF2-DEPLOYMENT 2017-11-29T02:01:27.750692-00:00 deployment-success

73d9c540-d4a8-11e7-bb72-fa163ef8df2b vnfm-deployment VNF2-ESC 2017-11-29T01:56:02.133663-00:00 deployment-success

VNF2-uas-uas-0#show logs 73d9c540-d4a8-11e7-bb72-fa163ef8df2b | display xml

<config xmlns="http://tail-f.com/ns/config/1.0">

<logs xmlns="http://www.cisco.com/usp/nfv/usp-autovnf-oper">

<tx-id>73d9c540-d4a8-11e7-bb72-fa163ef8df2b</tx-id>

<log>2017-11-29 01:56:02,142 - VNFM Deployment RPC triggered for deployment: VNF2-ESC, deactivate: 0

2017-11-29 01:56:02,179 - Notify deployment

..

2017-11-29 01:57:30,385 - Creating VNFM 'VNF2-ESC-ESC-1' with [python //opt/cisco/vnf-staging/bootvm.py VNF2-ESC-ESC-1 --flavor VNF2-ESC-ESC-flavor --image 3fe6b197-961b-4651-af22-dfd910436689 --net VNF2-UAS-uas-management --gateway_ip 172.168.10.1 --net VNF2-UAS-uas-orchestration --os_auth_url http://10.1.2.5:5000/v2.0 --os_tenant_name core --os_username ****** --os_password ****** --bs_os_auth_url http://10.1.2.5:5000/v2.0 --bs_os_tenant_name core --bs_os_username ****** --bs_os_password ****** --esc_ui_startup false --esc_params_file /tmp/esc_params.cfg --encrypt_key ****** --user_pass ****** --user_confd_pass ****** --kad_vif eth0 --kad_vip 172.168.10.7 --ipaddr 172.168.10.6 dhcp --ha_node_list 172.168.10.3 172.168.10.6 --file root:0755:/opt/cisco/esc/esc-scripts/esc_volume_em_staging.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_volume_em_staging.sh --file root:0755:/opt/cisco/esc/esc-scripts/esc_vpc_chassis_id.py:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_vpc_chassis_id.py --file root:0755:/opt/cisco/esc/esc-scripts/esc-vpc-di-internal-keys.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc-vpc-di-internal-keys.sh

將boot_vm.py行儲存到shell指令碼檔案(esc.sh),並使用正確的資訊(通常為core/<PASSWORD>)更新所有使用者名稱*****和密碼*****行。 也需要刪除—encrypt_key選項。對於user_pass和user_confd_pass,您需要使用格式 — username:密碼(示例 — admin:<PASSWORD>)。

從running-config查詢bootvm.py的URL,並獲取bootvm.py檔案到AutoVNF UAS VM。在這種情況下,10.1.2.3是AutoIT VM的IP:

root@VNF2-uas-uas-0:~# confd_cli -u admin -C

Welcome to the ConfD CLI

admin connected from 127.0.0.1 using console on VNF2-uas-uas-0

VNF2-uas-uas-0#show running-config autovnf-vnfm:vnfm

…

configs bootvm

value http:// 10.1.2.3:80/bundles/5.1.7-2007/vnfm-bundle/bootvm-2_3_2_155.py

!

root@VNF2-uas-uas-0:~# wget http://10.1.2.3:80/bundles/5.1.7-2007/vnfm-bundle/bootvm-2_3_2_155.py

--2017-12-01 20:25:52-- http://10.1.2.3 /bundles/5.1.7-2007/vnfm-bundle/bootvm-2_3_2_155.py

Connecting to 10.1.2.3:80... connected.

HTTP request sent, awaiting response... 200 OK

Length: 127771 (125K) [text/x-python]

Saving to: ‘bootvm-2_3_2_155.py’

100%[=====================================================================================>] 127,771 --.-K/s in 0.001s

2017-12-01 20:25:52 (173 MB/s) - ‘bootvm-2_3_2_155.py’ saved [127771/127771]

建立/tmp/esc_params.cfg檔案:

root@VNF2-uas-uas-0:~# echo "openstack.endpoint=publicURL" > /tmp/esc_params.cfg

執行shell指令碼以便從UAS節點部署ESC:

root@VNF2-uas-uas-0:~# /bin/sh esc.sh

+ python ./bootvm.py VNF2-ESC-ESC-1 --flavor VNF2-ESC-ESC-flavor --image 3fe6b197-961b-4651-af22-dfd910436689

--net VNF2-UAS-uas-management --gateway_ip 172.168.10.1 --net VNF2-UAS-uas-orchestration --os_auth_url

http://10.1.2.5:5000/v2.0 --os_tenant_name core --os_username core --os_password <PASSWORD> --bs_os_auth_url

http://10.1.2.5:5000/v2.0 --bs_os_tenant_name core --bs_os_username core --bs_os_password <PASSWORD>

--esc_ui_startup false --esc_params_file /tmp/esc_params.cfg --user_pass admin:<PASSWORD> --user_confd_pass

admin:<PASSWORD> --kad_vif eth0 --kad_vip 172.168.10.7 --ipaddr 172.168.10.6 dhcp --ha_node_list 172.168.10.3

172.168.10.6 --file root:0755:/opt/cisco/esc/esc-scripts/esc_volume_em_staging.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_volume_em_staging.sh

--file root:0755:/opt/cisco/esc/esc-scripts/esc_vpc_chassis_id.py:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc_vpc_chassis_id.py

--file root:0755:/opt/cisco/esc/esc-scripts/esc-vpc-di-internal-keys.sh:/opt/cisco/usp/uas/autovnf/vnfms/esc-scripts/esc-vpc-di-internal-keys.sh

登入到新的ESC並驗證備份狀態:

ubuntu@VNF2-uas-uas-0:~$ ssh admin@172.168.11.14

…

####################################################################

# ESC on VNF2-esc-esc-1.novalocal is in BACKUP state.

####################################################################

[admin@VNF2-esc-esc-1 ~]$ escadm status

0 ESC status=0 ESC Backup Healthy

[admin@VNF2-esc-esc-1 ~]$ health.sh

============== ESC HA (BACKUP) ===================================================

ESC HEALTH PASSED

從ESC恢復CF和EM虛擬機器

從新星清單中檢查CF和EM VM的狀態。它們必須處於ERROR狀態:

[stack@director ~]$ source corerc

[stack@director ~]$ nova list --field name,host,status |grep -i err

| 507d67c2-1d00-4321-b9d1-da879af524f8 | VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea | None | ERROR|

| f9c0763a-4a4f-4bbd-af51-bc7545774be2 | VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229 |None | ERROR

登入到ESC主伺服器,為每個受影響的EM和CF VM運行recovery-vm-action。耐心點。ESC會安排恢復操作,此操作可能在幾分鐘內不會發生。監控yangesc.log:

sudo /opt/cisco/esc/esc-confd/esc-cli/esc_nc_cli recovery-vm-action DO

[admin@VNF2-esc-esc-0 ~]$ sudo /opt/cisco/esc/esc-confd/esc-cli/esc_nc_cli recovery-vm-action DO VNF2-DEPLOYMENT-_VNF2-D_0_a6843886-77b4-4f38-b941-74eb527113a8

[sudo] password for admin:

Recovery VM Action

/opt/cisco/esc/confd/bin/netconf-console --port=830 --host=127.0.0.1 --user=admin --privKeyFile=/root/.ssh/confd_id_dsa --privKeyType=dsa --rpc=/tmp/esc_nc_cli.ZpRCGiieuW

<?xml version="1.0" encoding="UTF-8"?>

<rpc-reply xmlns="urn:ietf:params:xml:ns:netconf:base:1.0" message-id="1">

<ok/>

</rpc-reply>

[admin@VNF2-esc-esc-0 ~]$ tail -f /var/log/esc/yangesc.log

…

14:59:50,112 07-Nov-2017 WARN Type: VM_RECOVERY_COMPLETE

14:59:50,112 07-Nov-2017 WARN Status: SUCCESS

14:59:50,112 07-Nov-2017 WARN Status Code: 200

14:59:50,112 07-Nov-2017 WARN Status Msg: Recovery: Successfully recovered VM [VNF2-DEPLOYMENT-_VNF2-D_0_a6843886-77b4-4f38-b941-74eb527113a8]

登入到新EM並驗證EM狀態是否為up:

ubuntu@VNF2vnfddeploymentem-1:~$ /opt/cisco/ncs/current/bin/ncs_cli -u admin -C

admin connected from 172.17.180.6 using ssh on VNF2vnfddeploymentem-1

admin@scm# show ems

EM VNFM

ID SLA SCM PROXY

---------------------

2 up up up

3 up up up

登入到StarOS VNF並驗證CF卡是否處於備用狀態。

處理ESC恢復失敗

如果ESC由於意外狀態而無法啟動VM,建議通過重新啟動主ESC執行ESC切換。ESC切換大約需要一分鐘。在新的主ESC上運行health.sh指令碼以檢查狀態是否為up。主ESC啟動VM並修復VM狀態。完成此恢復任務最多需要5分鐘。

您可以監控/var/log/esc/yangesc.log和/var/log/esc/escmanager.log。如果您在5-7分鐘之後沒有看到虛擬機器被恢復,則使用者需要手動恢復受影響的虛擬機器。

OSD計算節點中的主機板更換

在活動之前,託管在「計算」節點中的VM將正常關閉,並且Ceph將進入維護模式。更換主機板後,VM會恢復回來,Ceph會移出維護模式。

將Ceph置於維護模式

驗證伺服器中的ceph osd樹狀態是否為up。

[heat-admin@pod1-osd-compute-1 ~]$ sudo ceph osd tree

ID WEIGHT TYPE NAME UP/DOWN REWEIGHT PRIMARY-AFFINITY

-1 13.07996 root default

-2 4.35999 host pod1-osd-compute-0

0 1.09000 osd.0 up 1.00000 1.00000

3 1.09000 osd.3 up 1.00000 1.00000

6 1.09000 osd.6 up 1.00000 1.00000

9 1.09000 osd.9 up 1.00000 1.00000

-3 4.35999 host pod1-osd-compute-2

1 1.09000 osd.1 up 1.00000 1.00000

4 1.09000 osd.4 up 1.00000 1.00000

7 1.09000 osd.7 up 1.00000 1.00000

10 1.09000 osd.10 up 1.00000 1.00000

-4 4.35999 host pod1-osd-compute-1

2 1.09000 osd.2 up 1.00000 1.00000

5 1.09000 osd.5 up 1.00000 1.00000

8 1.09000 osd.8 up 1.00000 1.00000

11 1.09000 osd.11 up 1.00000 1.00000

登入到OSD Compute節點,並將Ceph置於維護模式。

[root@pod1-osd-compute-1 ~]# sudo ceph osd set norebalance

[root@pod1-osd-compute-1 ~]# sudo ceph osd set noout

[root@pod1-osd-compute-1 ~]# sudo ceph status

cluster eb2bb192-b1c9-11e6-9205-525400330666

health HEALTH_WARN

noout,norebalance,sortbitwise,require_jewel_osds flag(s) set

monmap e1: 3 mons at {pod1-controller-0=11.118.0.40:6789/0,pod1-controller-1=11.118.0.41:6789/0,pod1-controller-2=11.118.0.42:6789/0}

election epoch 58, quorum 0,1,2 pod1-controller-0,pod1-controller-1,pod1-controller-2

osdmap e194: 12 osds: 12 up, 12 in

flags noout,norebalance,sortbitwise,require_jewel_osds

pgmap v584865: 704 pgs, 6 pools, 531 GB data, 344 kobjects

1585 GB used, 11808 GB / 13393 GB avail

704 active+clean

client io 463 kB/s rd, 14903 kB/s wr, 263 op/s rd, 542 op/s wr

附註:刪除Ceph後,VNF HD RAID進入「降級」狀態,但HDD必須仍然可以訪問。

確定Osd-Compute節點中託管的VM

確定OSD計算伺服器上託管的VM。可能發生兩種情況:

osd-compute伺服器包含VM的元素管理器(EM)/UAS/自動部署/自動IT組合:

[stack@director ~]$ nova list --field name,host | grep osd-compute-0

| c6144778-9afd-4946-8453-78c817368f18 | AUTO-DEPLOY-VNF2-uas-0 | pod1-osd-compute-0.localdomain |

| 2d051522-bce2-4809-8d63-0c0e17f251dc | AUTO-IT-VNF2-uas-0 | pod1-osd-compute-0.localdomain |

| 507d67c2-1d00-4321-b9d1-da879af524f8 | VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea | pod1-osd-compute-0.localdomain |

| f5bd7b9c-476a-4679-83e5-303f0aae9309 | VNF2-UAS-uas-0 | pod1-osd-compute-0.localdomain |

計算伺服器包含控制功能(CF)/彈性服務控制器(ESC)/元素管理器(EM)/(UAS)組合VM:

[stack@director ~]$ nova list --field name,host | grep osd-compute-1

| 507d67c2-1d00-4321-b9d1-da879af524f8 | VNF2-DEPLOYM_XXXX_0_c8d98f0f-d874-45d0-af75-88a2d6fa82ea | pod1-compute-8.localdomain |

| f9c0763a-4a4f-4bbd-af51-bc7545774be2 | VNF2-DEPLOYM_c1_0_df4be88d-b4bf-4456-945a-3812653ee229 | pod1-compute-8.localdomain |

| 75528898-ef4b-4d68-b05d-882014708694 | VNF2-ESC-ESC-0 | pod1-compute-8.localdomain |

| f5bd7b9c-476a-4679-83e5-303f0aae9309 | VNF2-UAS-uas-0 | pod1-compute-8.localdomain |

附註:此處顯示的輸出中,第一列與UUID相對應,第二列是VM名稱,第三列是存在VM的主機名。此輸出的引數將在後續章節中使用。

正常斷電

案例1. OSD計算節點主機CF/ESC/EM/UAS

無論CF/ESC/EM/UAS VM是託管在Compute節點還是OSD-Compute節點中,其優雅的強大功能的過程都是相同的。按照Compute Node中的Motherboard Replacement中的步驟正常關閉VM。

案例2. OSD計算節點託管自動部署/自動執行/EM/UAS

備份自動部署的CDB

定期或在每次啟用/取消啟用後備份autodeploy confd cdb資料並將檔案儲存到備份伺服器。自動部署不是冗餘的,如果此資料丟失,您將無法正常停用部署。

登入到AutoDeploy VM並備份confd cdb目錄。

ubuntu@auto-deploy-iso-2007-uas-0:~ $sudo -i

root@auto-deploy-iso-2007-uas-0:~#service uas-confd stop

uas-confd stop/waiting

root@auto-deploy-iso-2007-uas-0:~# cd /opt/cisco/usp/uas/confd-6.3.1/var/confd

root@auto-deploy-iso-2007-uas-0:/opt/cisco/usp/uas/confd-6.3.1/var/confd#tar cvf autodeploy_cdb_backup.tar cdb/

cdb/

cdb/O.cdb

cdb/C.cdb

cdb/aaa_init.xml

cdb/A.cdb

root@auto-deploy-iso-2007-uas-0:~# service uas-confd start

uas-confd start/running, process 13852

註:opy autodeploy_cdb_backup.tar用於備份伺服器。

從自動IT備份System.cfg

備份system.cfg檔案以備份伺服器:

Auto-it = 10.1.1.2

Backup server = 10.2.2.2

[stack@director ~]$ ssh ubuntu@10.1.1.2

ubuntu@10.1.1.2's password:

Welcome to Ubuntu 14.04.3 LTS (GNU/Linux 3.13.0-76-generic x86_64)

* Documentation: https://help.ubuntu.com/

System information as of Wed Jun 13 16:21:34 UTC 2018

System load: 0.02 Processes: 87

Usage of /: 15.1% of 78.71GB Users logged in: 0

Memory usage: 13% IP address for eth0: 172.16.182.4

Swap usage: 0%

Graph this data and manage this system at:

https://landscape.canonical.com/

Get cloud support with Ubuntu Advantage Cloud Guest:

http://www.ubuntu.com/business/services/cloud

Cisco Ultra Services Platform (USP)

Build Date: Wed Feb 14 12:58:22 EST 2018

Description: UAS build assemble-uas#1891

sha1: bf02ced

ubuntu@auto-it-vnf-uas-0:~$ scp -r /opt/cisco/usp/uploads/system.cfg root@10.2.2.2:/home/stack

root@10.2.2.2's password:

system.cfg 100% 565 0.6KB/s 00:00

ubuntu@auto-it-vnf-uas-0:~$

附註:無論VM是託管在Compute節點還是OSD-Compute節點中,EM/UAS虛擬機器正常運行的過程都是相同的。

按照Compute Node中的Motherboard Replacement中的步驟,正常關閉這些VM的電源。

更換主機板

有關更換UCS C240 M4伺服器主機板的步驟,請參閱:Cisco UCS C240 M4伺服器安裝和服務指南

使用CIMC IP登入到伺服器。

如果韌體與以前使用的推薦版本不一致,請執行BIOS升級。此處提供了BIOS升級步驟:Cisco UCS C系列機架式伺服器BIOS升級指南

將Ceph移出維護模式

登入到OSD Compute節點,並將Ceph從維護模式中移出。

[root@pod1-osd-compute-1 ~]# sudo ceph osd unset norebalance

[root@pod1-osd-compute-1 ~]# sudo ceph osd unset noout

[root@pod1-osd-compute-1 ~]# sudo ceph status

cluster eb2bb192-b1c9-11e6-9205-525400330666

health HEALTH_OK

monmap e1: 3 mons at {pod1-controller-0=11.118.0.40:6789/0,pod1-controller-1=11.118.0.41:6789/0,pod1-controller-2=11.118.0.42:6789/0}

election epoch 58, quorum 0,1,2 pod1-controller-0,pod1-controller-1,pod1-controller-2

osdmap e196: 12 osds: 12 up, 12 in

flags sortbitwise,require_jewel_osds

pgmap v584954: 704 pgs, 6 pools, 531 GB data, 344 kobjects

1585 GB used, 11808 GB / 13393 GB avail

704 active+clean

client io 12888 kB/s wr, 0 op/s rd, 81 op/s wr

恢復虛擬機器

案例1. OSD計算節點主機CF、ESC、EM和UAS

CF/ESC/EM/UAS VM的恢復過程是相同的,無論這些VM是託管在Compute節點還是OSD-Compute節點中。按照案例2中的步驟。計算節點主機CF/ESC/EM/UAS以還原虛擬機器。

案例2. OSD計算節點託管自動it、自動部署、EM和UAS

恢復自動部署VM

在OSPD中,如果自動部署虛擬機器受影響,但仍顯示活動/正在運行,則需要首先將其刪除。如果自動部署未受影響,請跳至自動it虛擬機器的恢復:

[stack@director ~]$ nova list |grep auto-deploy

| 9b55270a-2dcd-4ac1-aba3-bf041733a0c9 | auto-deploy-ISO-2007-uas-0 | ACTIVE | - | Running | mgmt=172.16.181.12, 10.1.2.7 [stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts

[stack@director ~]$ ./auto-deploy-booting.sh --floating-ip 10.1.2.7 --delete

刪除自動部署後,使用相同的floatingip地址重新創建它:

[stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts

[stack@director scripts]$ ./auto-deploy-booting.sh --floating-ip 10.1.2.7

2017-11-17 07:05:03,038 - INFO: Creating AutoDeploy deployment (1 instance(s)) on 'http://10.84.123.4:5000/v2.0' tenant 'core' user 'core', ISO 'default'

2017-11-17 07:05:03,039 - INFO: Loading image 'auto-deploy-ISO-5-1-7-2007-usp-uas-1.0.1-1504.qcow2' from '/opt/cisco/usp/uas-installer/images/usp-uas-1.0.1-1504.qcow2'

2017-11-17 07:05:14,603 - INFO: Loaded image 'auto-deploy-ISO-5-1-7-2007-usp-uas-1.0.1-1504.qcow2'

2017-11-17 07:05:15,787 - INFO: Assigned floating IP '10.1.2.7' to IP '172.16.181.7'

2017-11-17 07:05:15,788 - INFO: Creating instance 'auto-deploy-ISO-5-1-7-2007-uas-0'

2017-11-17 07:05:42,759 - INFO: Created instance 'auto-deploy-ISO-5-1-7-2007-uas-0'

2017-11-17 07:05:42,759 - INFO: Request completed, floating IP: 10.1.2.7

從備份伺服器複製Autodeploy.cfg檔案、ISO和confd_backup tar檔案以自動部署VM,並從備份tar檔案中還原confd cdb檔案:

ubuntu@auto-deploy-iso-2007-uas-0:~# sudo -i

ubuntu@auto-deploy-iso-2007-uas-0:# service uas-confd stop

uas-confd stop/waiting

root@auto-deploy-iso-2007-uas-0:# cd /opt/cisco/usp/uas/confd-6.3.1/var/confd

root@auto-deploy-iso-2007-uas-0:/opt/cisco/usp/uas/confd-6.3.1/var/confd# tar xvf /home/ubuntu/ad_cdb_backup.tar

cdb/

cdb/O.cdb

cdb/C.cdb

cdb/aaa_init.xml

cdb/A.cdb

root@auto-deploy-iso-2007-uas-0~# service uas-confd start

uas-confd start/running, process 2036

通過檢查以前的事務來驗證confd是否已正確載入。使用新的osd-compute名稱更新autodeploy.cfg。請參閱部分 — 最後步驟:更新自動部署配置。

root@auto-deploy-iso-2007-uas-0:~# confd_cli -u admin -C

Welcome to the ConfD CLI

admin connected from 127.0.0.1 using console on auto-deploy-iso-2007-uas-0

auto-deploy-iso-2007-uas-0#show transaction

SERVICE SITE

DEPLOYMENT SITE TX AUTOVNF VNF AUTOVNF

TX ID TX TYPE ID DATE AND TIME STATUS ID ID ID ID TX ID

-------------------------------------------------------------------------------------------------------------------------------------

1512571978613 service-deployment tb5bxb 2017-12-06T14:52:59.412+00:00 deployment-success

auto-deploy-iso-2007-uas-0# exit

恢復自動IT虛擬機器

在OSPD中,如果自動轉換虛擬機器受到影響,但仍顯示為活動/運行,則需要將其刪除。如果auto-it未受影響,請跳至下一個:

[stack@director ~]$ nova list |grep auto-it

| 580faf80-1d8c-463b-9354-781ea0c0b352 | auto-it-vnf-ISO-2007-uas-0 | ACTIVE | - | Running | mgmt=172.16.181.3, 10.1.2.8 [stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts

[stack@director ~]$ ./ auto-it-vnf-staging.sh --floating-ip 10.1.2.8 --delete

通過運行自動IT-VNF暫存指令碼重新建立自動IT:

[stack@director ~]$ cd /opt/cisco/usp/uas-installer/scripts

[stack@director scripts]$ ./auto-it-vnf-staging.sh --floating-ip 10.1.2.8

2017-11-16 12:54:31,381 - INFO: Creating StagingServer deployment (1 instance(s)) on 'http://10.84.123.4:5000/v2.0' tenant 'core' user 'core', ISO 'default'

2017-11-16 12:54:31,382 - INFO: Loading image 'auto-it-vnf-ISO-5-1-7-2007-usp-uas-1.0.1-1504.qcow2' from '/opt/cisco/usp/uas-installer/images/usp-uas-1.0.1-1504.qcow2'

2017-11-16 12:54:51,961 - INFO: Loaded image 'auto-it-vnf-ISO-5-1-7-2007-usp-uas-1.0.1-1504.qcow2'

2017-11-16 12:54:53,217 - INFO: Assigned floating IP '10.1.2.8' to IP '172.16.181.9'

2017-11-16 12:54:53,217 - INFO: Creating instance 'auto-it-vnf-ISO-5-1-7-2007-uas-0'

2017-11-16 12:55:20,929 - INFO: Created instance 'auto-it-vnf-ISO-5-1-7-2007-uas-0'

2017-11-16 12:55:20,930 - INFO: Request completed, floating IP: 10.1.2.8

重新載入ISO映像。在這種情況下,自動IT IP位址為10.1.2.8。這將需要幾分鐘來載入:

[stack@director ~]$ cd images/5_1_7-2007/isos

[stack@director isos]$ curl -F file=@usp-5_1_7-2007.iso http://10.1.2.8:5001/isos

{

"iso-id": "5.1.7-2007"

}

to check the ISO image:

[stack@director isos]$ curl http://10.1.2.8:5001/isos

{

"isos": [

{

"iso-id": "5.1.7-2007"

}

]

}

將VNF system.cfg檔案從OSPD自動部署目錄複製到自動IT虛擬機器:

[stack@director autodeploy]$ scp system-vnf* ubuntu@10.1.2.8:.

ubuntu@10.1.2.8's password:

system-vnf1.cfg 100% 1197 1.2KB/s 00:00

system-vnf2.cfg 100% 1197 1.2KB/s 00:00

ubuntu@auto-it-vnf-iso-2007-uas-0:~$ pwd

/home/ubuntu

ubuntu@auto-it-vnf-iso-2007-uas-0:~$ ls

system-vnf1.cfg system-vnf2.cfg

附註:無論虛擬機器是託管在電腦中還是託管在OSD-Compute中,EM和UAS虛擬機器的恢復過程都是相同的。按照Replace Motherboard in Compute Node中的步驟,正常關閉這些VM的電源。

更換控制器節點中的主機板

驗證控制器狀態並將群集置於維護模式

在OSPD中,登入到控制器並驗證PC是否處於正常狀態 — 所有三個控制器都處於聯機狀態,Galera會將所有三個控制器顯示為主控制器。

[heat-admin@pod1-controller-0 ~]$ sudo pcs status

Cluster name: tripleo_cluster

Stack: corosync

Current DC: pod1-controller-2 (version 1.1.15-11.el7_3.4-e174ec8) - partition with quorum

Last updated: Mon Dec 4 00:46:10 2017 Last change: Wed Nov 29 01:20:52 2017 by hacluster via crmd on pod1-controller-0

3 nodes and 22 resources configured

Online: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

Full list of resources:

ip-11.118.0.42 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-11.119.0.47 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

ip-11.120.0.49 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-192.200.0.102 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

Clone Set: haproxy-clone [haproxy]

Started: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

Master/Slave Set: galera-master [galera]

Masters: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

ip-11.120.0.47 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

Clone Set: rabbitmq-clone [rabbitmq]

Started: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

Master/Slave Set: redis-master [redis]

Masters: [ pod1-controller-2 ]

Slaves: [ pod1-controller-0 pod1-controller-1 ]

ip-10.84.123.35 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

openstack-cinder-volume (systemd:openstack-cinder-volume): Started pod1-controller-2

my-ipmilan-for-controller-0 (stonith:fence_ipmilan): Started pod1-controller-0

my-ipmilan-for-controller-1 (stonith:fence_ipmilan): Started pod1-controller-0

my-ipmilan-for-controller-2 (stonith:fence_ipmilan): Started pod1-controller-0

Daemon Status:

corosync: active/enabled

pacemaker: active/enabled

pcsd: active/enabled

將群集置於維護模式:

[heat-admin@pod1-controller-0 ~]$ sudo pcs cluster standby

[heat-admin@pod1-controller-0 ~]$ sudo pcs status

Cluster name: tripleo_cluster

Stack: corosync

Current DC: pod1-controller-2 (version 1.1.15-11.el7_3.4-e174ec8) - partition with quorum

Last updated: Mon Dec 4 00:48:24 2017 Last change: Mon Dec 4 00:48:18 2017 by root via crm_attribute on pod1-controller-0

3 nodes and 22 resources configured

Node pod1-controller-0: standby

Online: [ pod1-controller-1 pod1-controller-2 ]

Full list of resources:

ip-11.118.0.42 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-11.119.0.47 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

ip-11.120.0.49 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-192.200.0.102 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

Clone Set: haproxy-clone [haproxy]

Started: [ pod1-controller-1 pod1-controller-2 ]

Stopped: [ pod1-controller-0 ]

Master/Slave Set: galera-master [galera]

Masters: [ pod1-controller-1 pod1-controller-2 ]

Slaves: [ pod1-controller-0 ]

ip-11.120.0.47 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

Clone Set: rabbitmq-clone [rabbitmq]

Started: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

Master/Slave Set: redis-master [redis]

Masters: [ pod1-controller-2 ]

Slaves: [ pod1-controller-1 ]

Stopped: [ pod1-controller-0 ]

ip-10.84.123.35 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

openstack-cinder-volume (systemd:openstack-cinder-volume): Started pod1-controller-2

my-ipmilan-for-controller-0 (stonith:fence_ipmilan): Started pod1-controller-1

my-ipmilan-for-controller-1 (stonith:fence_ipmilan): Started pod1-controller-1

my-ipmilan-for-controller-2 (stonith:fence_ipmilan): Started pod1-controller-2

更換主機板

有關更換UCS C240 M4伺服器主機板的步驟,請參閱:Cisco UCS C240 M4伺服器安裝和服務指南

使用CIMC IP登入到伺服器。

如果韌體與以前使用的推薦版本不一致,請執行BIOS升級。此處提供了BIOS升級步驟:Cisco UCS C系列機架式伺服器BIOS升級指南

還原群集狀態

登入已受影響的控制器,通過設定unstandby來移除待機模式。驗證控制器是否與群集聯機,Galera將全部三個控制器顯示為主控制器。這可能需要幾分鐘時間。

[heat-admin@pod1-controller-0 ~]$ sudo pcs cluster unstandby

[heat-admin@pod1-controller-0 ~]$ sudo pcs status

Cluster name: tripleo_cluster

Stack: corosync

Current DC: pod1-controller-2 (version 1.1.15-11.el7_3.4-e174ec8) - partition with quorum

Last updated: Mon Dec 4 01:08:10 2017 Last change: Mon Dec 4 01:04:21 2017 by root via crm_attribute on pod1-controller-0

3 nodes and 22 resources configured

Online: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

Full list of resources:

ip-11.118.0.42 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-11.119.0.47 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

ip-11.120.0.49 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-192.200.0.102 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

Clone Set: haproxy-clone [haproxy]

Started: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

Master/Slave Set: galera-master [galera]

Masters: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

ip-11.120.0.47 (ocf::heartbeat:IPaddr2): Started pod1-controller-2

Clone Set: rabbitmq-clone [rabbitmq]

Started: [ pod1-controller-0 pod1-controller-1 pod1-controller-2 ]

Master/Slave Set: redis-master [redis]

Masters: [ pod1-controller-2 ]

Slaves: [ pod1-controller-0 pod1-controller-1 ]

ip-10.84.123.35 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

openstack-cinder-volume (systemd:openstack-cinder-volume): Started pod1-controller-2

my-ipmilan-for-controller-0 (stonith:fence_ipmilan): Started pod1-controller-1

my-ipmilan-for-controller-1 (stonith:fence_ipmilan): Started pod1-controller-1

my-ipmilan-for-controller-2 (stonith:fence_ipmilan): Started pod1-controller-2

Daemon Status:

corosync: active/enabled

pacemaker: active/enabled

pcsd: active/enabled

修訂記錄

| 修訂 | 發佈日期 | 意見 |

|---|---|---|

1.0 |

22-Aug-2018

|

初始版本 |

由思科工程師貢獻

- Padmaraj Ramanoudjam and思科進階服務

- Partheeban Rajagopal思科進階服務

意見

意見