Introduction

This document describes the basic configuration and troubleshooting of VXLAN on Cisco IOS® XE devices.

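Prerequisites

Requirements

Cisco recommends that you have knowledge of these topics:

Components Used

The information in this document is based on these software and hardware versions:

- ASR1004 that runs software 03.16.00.S

- CSR1000v (VXE) that runs software 3.16.03.S

The information in this document was created from the devices in a specific lab environment. All of the devices used in this document started with a cleared (default) configuration. If your network is live, make sure that you understand the potential impact of any command.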

背景信息

虚拟可扩展LAN (VXLAN)作为数据中心互联(DCI)解决方案越来越受欢迎。VXLAN功能用于在第3层/公共路由域上提供第2层扩展。本文档讨论Cisco IOS XE设备上的基本配置和故障排除。

本文档的“配置”和“验证”部分涵盖两个场景:

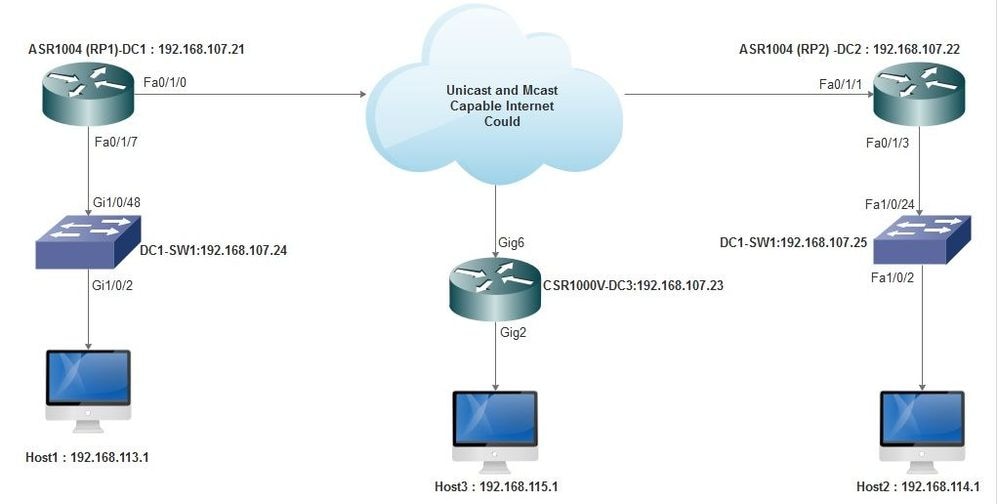

- 方案A描述了组播模式下三个数据中心之间的VXLAN配置。

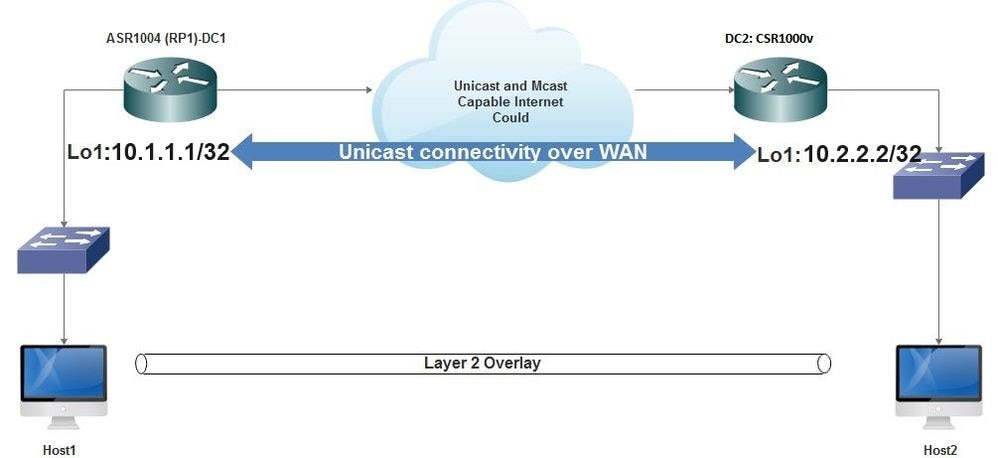

- 方案B描述了单播模式下两个数据中心之间的VXLAN配置。

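Configure

Scenario A: Configure VXLAN Between Three Data Centers in Multicast Mode

Base Configuration

Multicast mode requires both unicast and multicast connectivity between the sites. This configuration guide uses Open Shortest Path First (OSPF) for unicast connectivity and bidirectional Protocol Independent Multicast (PIM) for multicast connectivity.

Here is the base configuration for the multicast mode of operation, used on all three data centers (DC1 is shown):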

!

DC1#show run | sec ospf

router ospf 1

network 10.1.1.1 0.0.0.0 area 0

network 10.10.10.4 0.0.0.3 area 0

!
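The `network` statements use wildcard (inverse) masks: `0.0.0.0` matches only the loopback address 10.1.1.1, and `0.0.0.3` matches any address from 10.10.10.4 through 10.10.10.7. A minimal Python sketch of that matching rule follows; the interface addresses tested at the bottom are hypothetical, since the WAN interface configuration is not shown.

```python
import ipaddress


def wildcard_match(address: str, network: str, wildcard: str) -> bool:
    """Return True if `address` matches `network` under an IOS-style wildcard mask.

    Wildcard bits set to 1 are "don't care"; bits set to 0 must match exactly.
    """
    addr = int(ipaddress.IPv4Address(address))
    net = int(ipaddress.IPv4Address(network))
    care_bits = ~int(ipaddress.IPv4Address(wildcard)) & 0xFFFFFFFF
    return (addr & care_bits) == (net & care_bits)


# The two "network ... area 0" statements from the DC1 example above.
OSPF_NETWORK_STATEMENTS = [("10.1.1.1", "0.0.0.0"), ("10.10.10.4", "0.0.0.3")]


def ospf_enabled_on(interface_ip: str) -> bool:
    """True if any network statement would place this interface address in area 0."""
    return any(wildcard_match(interface_ip, net, wc) for net, wc in OSPF_NETWORK_STATEMENTS)


if __name__ == "__main__":
    # Hypothetical interface addresses, for illustration only.
    for ip in ["10.1.1.1", "10.10.10.5", "10.10.10.6", "10.10.10.9"]:
        print(ip, ospf_enabled_on(ip))
```

Here 10.10.10.5 and 10.10.10.6 fall inside 10.10.10.4/30 and match, while 10.10.10.9 does not.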

PIM bidirectional configuration:

!

DC1#show run | sec pim

ip pim bidir-enable

ip pim send-rp-discovery scope 10

ip pim bsr-candidate Loopback1 0

ip pim rp-candidate Loopback1 group-list 10 bidir

!

access-list 10 permit 239.0.0.0 0.0.0.255

!

DC1#

!
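`group-list 10` limits the bidirectional RP to the groups that standard ACL 10 permits (239.0.0.0 with wildcard 0.0.0.255, that is, 239.0.0.0 through 239.0.0.255). The group you assign to a VNI with `mcast-group` therefore needs to be an IPv4 multicast address in that range to be served by this RP. A small Python check, assuming ACL 10 is the only group-to-RP mapping in play:

```python
import ipaddress

# Standard ACL 10 from the example: "permit 239.0.0.0 0.0.0.255".
# A contiguous wildcard of 0.0.0.255 is equivalent to the prefix 239.0.0.0/24.
ACL_10_RANGE = ipaddress.IPv4Network("239.0.0.0/24")


def served_by_bidir_rp(group: str) -> bool:
    """True if `group` is a multicast address permitted by ACL 10 (the RP group-list)."""
    addr = ipaddress.IPv4Address(group)
    return addr.is_multicast and addr in ACL_10_RANGE


if __name__ == "__main__":
    for group in ["239.0.0.10", "239.0.1.10", "10.0.0.10"]:
        print(group, served_by_bidir_rp(group))
```

Only 239.0.0.10 passes: 239.0.1.10 is a valid multicast address outside ACL 10, and 10.0.0.10 is not a multicast address.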

In addition, enable PIM sparse mode on all Layer 3 interfaces, including the loopback:

!

DC1#show run interface lo1

Building configuration...

Current configuration : 83 bytes

!

interface Loopback1

ip address 10.1.1.1 255.255.255.255

ip pim sparse-mode

end

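Also, make sure that multicast routing is enabled on your device and that the multicast routing table is being populated.

Network Diagram

Internet with unicast and multicast support

DC1 (VTEP1) Configuration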

!

!

vxlan udp port 1024

!

interface Loopback1

ip address 10.1.1.1 255.255.255.255

ip pim sparse-mode

!

Define the VNI member and the member interface under the bridge-domain configuration:

!

bridge-domain 1

member vni 6001

member FastEthernet0/1/7 service-instance 1

!

Create the network virtual interface (NVE) and define the VNI members that must be extended over the WAN to the other data centers:

!

interface nve1

no ip address

no shutdown

member vni 6001 mcast-group 239.0.0.10

!

source-interface Loopback1

!
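Each VTEP encapsulates the original Layer 2 frame in outer Ethernet, IP, and UDP headers followed by an 8-byte VXLAN header (RFC 7348) that carries the 24-bit VNI, here 6001. The outer UDP destination port is the value set with `vxlan udp port 1024`; the IANA-assigned default is 4789. A minimal Python sketch that builds and parses only the VXLAN header, leaving out the outer headers:

```python
import struct

VXLAN_UDP_PORT = 1024   # set by "vxlan udp port 1024" in this document; the IANA default is 4789
VXLAN_FLAG_I = 0x08     # "I" flag: the VNI field is valid (RFC 7348)


def build_vxlan_header(vni: int) -> bytes:
    """Return the 8-byte VXLAN header for a 24-bit VNI."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: 8 flag bits + 24 reserved bits. Word 2: 24-bit VNI + 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_I << 24, vni << 8)


def parse_vxlan_header(header: bytes) -> int:
    """Return the VNI from an 8-byte VXLAN header, checking the I flag."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_I:
        raise ValueError("VXLAN I flag not set")
    return vni_word >> 8


if __name__ == "__main__":
    header = build_vxlan_header(6001)
    print(header.hex())                 # 0800000000177100
    print(parse_vxlan_header(header))   # 6001
```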

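Create a service instance on the LAN interface (the interface that connects to the LAN network) to cover a specific VLAN (802.1Q-tagged traffic), in this case VLAN 1: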

!

interface FastEthernet0/1/7

no ip address

negotiation auto

cdp enable

no shut

!

Remove the VLAN tag before traffic is sent across the overlay network, and push the tag back after the return traffic is sent into the VLAN:

!

service instance 1 ethernet

encapsulation untagged

!
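The pop and push operate on the 4-byte IEEE 802.1Q tag: a TPID of 0x8100 followed by a 16-bit Tag Control Information field whose low 12 bits carry the VLAN ID. The Python sketch below only illustrates that frame manipulation; it is not how IOS XE implements the service instance, and the function names are hypothetical.

```python
import struct
from typing import Optional, Tuple

TPID_8021Q = 0x8100  # EtherType value that marks an 802.1Q tag


def pop_vlan_tag(frame: bytes) -> Tuple[bytes, Optional[int]]:
    """Remove the outer 802.1Q tag, if any. Returns (frame_without_tag, vlan_id or None)."""
    # The tag sits right after the 6-byte destination and 6-byte source MAC addresses.
    if len(frame) >= 18 and struct.unpack("!H", frame[12:14])[0] == TPID_8021Q:
        tci = struct.unpack("!H", frame[14:16])[0]
        return frame[:12] + frame[16:], tci & 0x0FFF   # low 12 bits of the TCI are the VLAN ID
    return frame, None


def push_vlan_tag(frame: bytes, vlan_id: int, priority: int = 0) -> bytes:
    """Insert an 802.1Q tag (TPID + TCI) after the MAC addresses."""
    if not 0 <= vlan_id <= 0x0FFF or not 0 <= priority <= 7:
        raise ValueError("invalid VLAN ID or priority")
    tci = (priority << 13) | vlan_id
    return frame[:12] + struct.pack("!HH", TPID_8021Q, tci) + frame[12:]


if __name__ == "__main__":
    untagged = bytes.fromhex("ffffffffffff" "005056ad1ad8" "0806") + bytes(28)
    tagged = push_vlan_tag(untagged, 1)
    print(tagged[12:16].hex())          # 81000001
    print(pop_vlan_tag(tagged)[1])      # 1
```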

DC2 (VTEP2) Configuration

!

!

vxlan udp port 1024

!

interface Loopback1

ip address 10.2.2.2 255.255.255.255

ip pim sparse-mode

!

!

bridge-domain 1

member vni 6001

member FastEthernet0/1/3 service-instance 1

!

!

interface nve1

no ip address

member vni 6001 mcast-group 239.0.0.10

!

source-interface Loopback1

no shutdown

!

!

interface FastEthernet0/1/3

no ip address

negotiation auto

cdp enable

no shut

!

service instance 1 ethernet

encapsulation untagged

!

DC3 (VTEP3) Configuration

!

!

vxlan udp port 1024

!

interface Loopback1

ip address 10.3.3.3 255.255.255.255

ip pim sparse-mode

!

!

bridge-domain 1

member vni 6001

member GigabitEthernet2 service-instance 1

!

interface nve1

no ip address

no shutdown

member vni 6001 mcast-group 239.0.0.10

!

source-interface Loopback1

!

interface gig2

no ip address

negotiation auto

cdp enable

no shut

!

service instance 1 ethernet

encapsulation untagged

!

Scenario B: Configure VXLAN Between Two Data Centers in Unicast Mode

Network Diagram

Unicast connectivity over the WAN

DC1 Configuration

!

interface nve1

no ip address

member vni 6001

! The ingress-replication address should be the loopback IP address of the peer data center.

!

ingress-replication 10.2.2.2

!

source-interface Loopback1

!

!

interface gig0/2/1

no ip address

negotiation auto

cdp enable

!

service instance 1 ethernet

encapsulation untagged

!

!

!

bridge-domain 1

member vni 6001

member gig0/2/1 service-instance 1
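Without a multicast underlay, the ingress VTEP handles broadcast, unknown unicast, and multicast (BUM) frames itself: it sends a separate unicast VXLAN copy to each address listed under `ingress-replication`, while known unicast frames go only to the VTEP where the destination MAC address was learned. A simplified Python model of that forwarding decision; the names are hypothetical and many data-plane details are ignored.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class VxlanCopy:
    outer_src: str       # local VTEP (source-interface Loopback1)
    outer_dst: str       # remote VTEP that receives this unicast copy
    vni: int
    inner_dst_mac: str


def replicate(inner_dst_mac: str, vni: int, local_vtep: str,
              ingress_peers: List[str], mac_to_vtep: Dict[str, str]) -> List[VxlanCopy]:
    """Known unicast goes to the learned VTEP; BUM traffic is copied to every static peer."""
    if inner_dst_mac in mac_to_vtep:
        return [VxlanCopy(local_vtep, mac_to_vtep[inner_dst_mac], vni, inner_dst_mac)]
    # Broadcast, unknown unicast, and multicast: one unicast copy per ingress-replication peer.
    return [VxlanCopy(local_vtep, peer, vni, inner_dst_mac) for peer in ingress_peers]


if __name__ == "__main__":
    # DC1 in Scenario B has a single peer, 10.2.2.2.
    copies = replicate("ffff.ffff.ffff", 6001, "10.1.1.1", ["10.2.2.2"], {})
    print([c.outer_dst for c in copies])   # ['10.2.2.2']
```

With more peers the number of copies grows linearly, which is the main scaling cost of unicast mode compared with a multicast underlay.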

DC2 Configuration

!

interface nve1

no ip address

member vni 6001

ingress-replication 10.1.1.1

!

source-interface Loopback1

!

!

interface gig5

no ip address

negotiation auto

cdp enable

!

service instance 1 ethernet

encapsulation untagged

!

!

bridge-domain 1

member vni 6001

member gig5 service-instance 1

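Verify

Scenario A: Configure VXLAN Between Three Data Centers in Multicast Mode

After you complete the Scenario A configuration, the hosts connected in each data center must be able to reach each other within the same broadcast domain.

Use these commands to verify the configuration. Sample output is provided under Scenario B.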

Router#show nve vni

Router#show nve vni interface nve1

Router#show nve interface nve1

Router#show nve interface nve1 detail

Router#show nve peers

Scenario B: Configure VXLAN Between Two Data Centers in Unicast Mode

On DC1:

DC1#show nve vni

Interface VNI Multicast-group VNI state

nve1 6001 N/A Up

DC1#show nve interface nve1 detail

Interface: nve1, State: Admin Up, Oper Up Encapsulation: Vxlan

source-interface: Loopback1 (primary:10.1.1.1 vrf:0)

Pkts In Bytes In Pkts Out Bytes Out

60129 6593586 55067 5303698

DC1#show nve peers

Interface Peer-IP VNI Peer state

nve1 10.2.2.2 6000 -
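With many VTEPs, it can be convenient to check `show nve peers` from a script. A minimal Python parser for the four-column layout shown above; it assumes whitespace-separated columns and a single-word state, and other releases can print more columns, so verify it against your own output. A `-` in the state column, as on DC1, is only flagged for a closer look rather than treated as a failure.

```python
from typing import Dict, List


def parse_nve_peers(output: str) -> List[Dict[str, object]]:
    """Parse data rows of `show nve peers` (Interface, Peer-IP, VNI, Peer state)."""
    peers: List[Dict[str, object]] = []
    for line in output.splitlines():
        fields = line.split()
        # Data rows have exactly four fields with a numeric VNI; skip the header and prompt.
        if len(fields) != 4 or not fields[2].isdigit():
            continue
        interface, peer_ip, vni, state = fields
        peers.append({"interface": interface, "peer_ip": peer_ip,
                      "vni": int(vni), "state": state})
    return peers


def peers_needing_attention(peers: List[Dict[str, object]]) -> List[Dict[str, object]]:
    """Return peers whose state column is anything other than 'Up'."""
    return [p for p in peers if p["state"] != "Up"]


if __name__ == "__main__":
    sample = """DC1#show nve peers
Interface Peer-IP VNI Peer state
nve1 10.2.2.2 6000 -"""
    for peer in parse_nve_peers(sample):
        print(peer)
```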

On DC2:

DC2#show nve vni

Interface VNI Multicast-group VNI state

nve1 6000 N/A Up

DC2#show nve interface nve1 detail

Interface: nve1, State: Admin Up, Oper Up Encapsulation: Vxlan

source-interface: Loopback1 (primary:10.2.2.2 vrf:0)

Pkts In Bytes In Pkts Out Bytes Out

70408 7921636 44840 3950835

DC2#show nve peers

Interface Peer-IP VNI Peer state

nve 10.1.1.1 6000 Up

DC2#show bridge-domain 1

Bridge-domain 1 (3 ports in all)

State: UP Mac learning: Enabled

Aging-Timer: 300 second(s)

BDI1 (up)

GigabitEthernet0/2/1 service instance 1

vni 6001

AED MAC address Policy Tag Age Pseudoport

0 7CAD.74FF.2F66 forward dynamic 281 nve1.VNI6001, VxLAN src: 10.1.1.1 dst: 10.2.2.2

0 B838.6130.DA80 forward dynamic 288 nve1.VNI6001, VxLAN src: 10.1.1.1 dst: 10.2.2.2

0 0050.56AD.1AD8 forward dynamic 157 nve1.VNI6001, VxLAN src: 10.1.1.1 dst: 10.2.2.2
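The table shows remote MAC addresses learned dynamically on the `nve1.VNI6001` pseudoport, each tied to the VXLAN source and destination VTEP addresses, with entries subject to the 300-second aging timer. A toy Python model of that learn, look-up, and age-out behavior; it is not the IOS XE data structure, and the class name is hypothetical.

```python
import time
from typing import Dict, Optional, Tuple


class BridgeDomainMacTable:
    """Toy model of dynamic MAC learning in one bridge domain, with an aging timer."""

    def __init__(self, aging_seconds: float = 300.0) -> None:
        self.aging_seconds = aging_seconds
        self._entries: Dict[str, Tuple[str, float]] = {}   # mac -> (pseudoport, last_seen)

    def learn(self, mac: str, pseudoport: str, now: Optional[float] = None) -> None:
        """Record or refresh where `mac` was last seen as a source address."""
        now = time.monotonic() if now is None else now
        self._entries[mac.lower()] = (pseudoport, now)

    def lookup(self, mac: str, now: Optional[float] = None) -> Optional[str]:
        """Return the pseudoport for `mac`, or None if unknown or aged out (flood instead)."""
        now = time.monotonic() if now is None else now
        entry = self._entries.get(mac.lower())
        if entry is None:
            return None
        pseudoport, last_seen = entry
        if now - last_seen >= self.aging_seconds:
            del self._entries[mac.lower()]
            return None
        return pseudoport


if __name__ == "__main__":
    table = BridgeDomainMacTable(aging_seconds=300)
    table.learn("7CAD.74FF.2F66", "nve1.VNI6001 dst 10.2.2.2", now=0)
    print(table.lookup("7cad.74ff.2f66", now=120))   # nve1.VNI6001 dst 10.2.2.2
    print(table.lookup("7cad.74ff.2f66", now=301))   # None (aged out)
```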

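Troubleshoot

The commands described in the Verify section provide basic troubleshooting steps. When the system does not work, these additional diagnostics can be useful.

Note: Some of these diagnostics can cause increased memory and CPU utilization.

Debug Diagnostics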

#debug nve error

*Jan 4 20:00:54.993: NVE-MGR-PEER ERROR: Intf state force down successful for mcast nodes

*Jan 4 20:00:54.993: NVE-MGR-PEER ERROR: Intf state force down successful for mcast nodes

*Jan 4 20:00:54.995: NVE-MGR-PEER ERROR: Intf state force down successful for peer nodes

*Jan 4 20:00:54.995: NVE-MGR-PEER ERROR: Intf state force down successful for peer nodes

#show nve log error

[01/01/70 00:04:34.130 UTC 1 3] NVE-MGR-STATE ERROR: vni 6001: error in create notification to Tunnel

[01/01/70 00:04:34.314 UTC 2 3] NVE-MGR-PEER ERROR: Intf state force up successful for mcast nodes

[01/01/70 00:04:34.326 UTC 3 3] NVE-MGR-PEER ERROR: Intf state force up successful for peer nodes

[01/01/70 01:50:59.650 UTC 4 3] NVE-MGR-PEER ERROR: Intf state force down successful for mcast nodes

[01/01/70 01:50:59.654 UTC 5 3] NVE-MGR-PEER ERROR: Intf state force down successful for peer nodes

[01/01/70 01:50:59.701 UTC 6 3] NVE-MGR-PEER ERROR: Intf state force up successful for mcast nodes

[01/01/70 01:50:59.705 UTC 7 3] NVE-MGR-PEER ERROR: Intf state force up successful for peer nodes

[01/01/70 01:54:55.166 UTC 8 61] NVE-MGR-PEER ERROR: Intf state force down successful for mcast nodes

[01/01/70 01:54:55.168 UTC 9 61] NVE-MGR-PEER ERROR: Intf state force down successful for peer nodes

[01/01/70 01:55:04.432 UTC A 3] NVE-MGR-PEER ERROR: Intf state force up successful for mcast nodes

[01/01/70 01:55:04.434 UTC B 3] NVE-MGR-PEER ERROR: Intf state force up successful for peer nodes

[01/01/70 01:55:37.670 UTC C 61] NVE-MGR-PEER ERROR: Intf state force down successful for mcast nodes

#show nve log event

[01/04/70 19:48:51.883 UTC 1DD16 68] NVE-MGR-DB: Return vni 6001 for pi_hdl[0x437C9B68]

[01/04/70 19:48:51.884 UTC 1DD17 68] NVE-MGR-DB: Return pd_hdl[0x1020010] for pi_hdl[0x437C9B68]

[01/04/70 19:48:51.884 UTC 1DD18 68] NVE-MGR-DB: Return vni 6001 for pi_hdl[0x437C9B68]

[01/04/70 19:49:01.884 UTC 1DD19 68] NVE-MGR-DB: Return pd_hdl[0x1020010] for pi_hdl[0x437C9B68]

[01/04/70 19:49:01.884 UTC 1DD1A 68] NVE-MGR-DB: Return vni 6001 for pi_hdl[0x437C9B68]

[01/04/70 19:49:01.885 UTC 1DD1B 68] NVE-MGR-DB: Return pd_hdl[0x1020010] for pi_hdl[0x437C9B68]

[01/04/70 19:49:01.885 UTC 1DD1C 68] NVE-MGR-DB: Return vni 6001 for pi_hdl[0x437C9B68]

[01/04/70 19:49:11.886 UTC 1DD1D 68] NVE-MGR-DB: Return pd_hdl[0x1020010] for pi_hdl[0x437C9B68]

[01/04/70 19:49:11.886 UTC 1DD1E 68] NVE-MGR-DB: Return vni 6001 for pi_hdl[0x437C9B68]

[01/04/70 19:49:11.887 UTC 1DD1F 68] NVE-MGR-DB: Return pd_hdl[0x1020010] for pi_hdl[0x437C9B68]

[01/04/70 19:49:11.887 UTC 1DD20 68] NVE-MGR-DB: Return vni 6001 for pi_hdl[0x437C9B68]

[01/04/70 19:49:21.884 UTC 1DD21 68] NVE-MGR-DB: Return pd_hdl[0x1020010] for pi_hdl[0x437C9B68]

Embedded Packet Capture

The Embedded Packet Capture (EPC) feature available in Cisco IOS XE software can provide additional troubleshooting information.

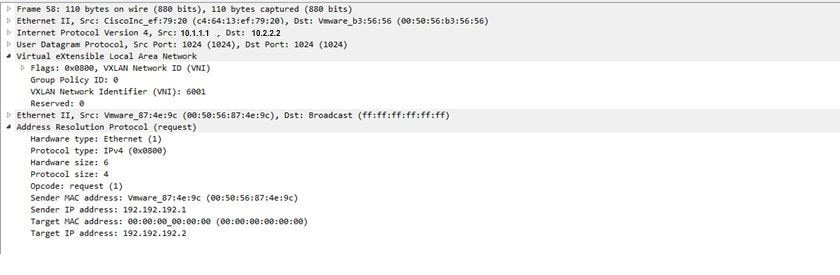

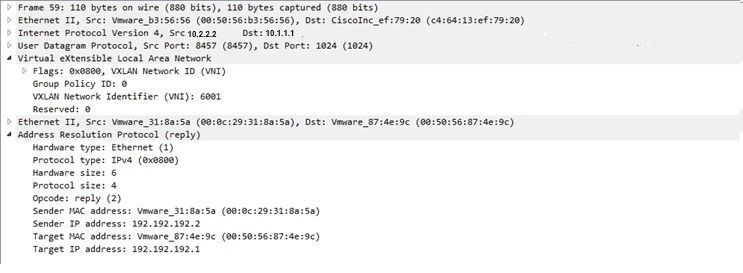

For example, this capture shows that the packets are encapsulated with VXLAN:

EPC configuration (TEST_ACL is the access list used to filter the captured data):

#monitor capture TEST access-list TEST_ACL interface gigabitEthernet0/2/0 both

#monitor capture TEST buffer size 10

#monitor capture TEST start

Here is the resulting packet dump:

#show monitor capture TEST buffer dump

#monitor capture TEST export bootflash:TEST.pcap

The export command saves the capture in pcap format to bootflash:, from where you can download it and open it in Wireshark.

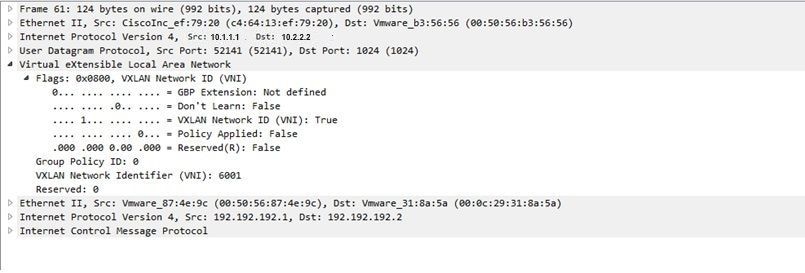

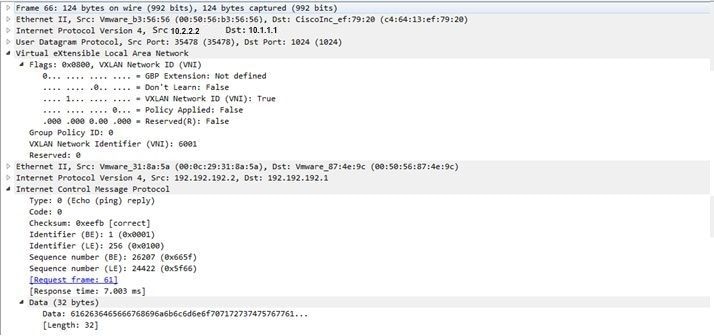
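When you open the exported TEST.pcap in Wireshark, note that its VXLAN dissector is tied to UDP port 4789 by default, so traffic on port 1024 may need to be decoded manually with Decode As. For scripted checks, this standard-library Python sketch extracts the VNI and inner MAC addresses from one captured frame. It assumes an untagged outer frame, an IPv4 underlay, and UDP destination port 1024, and it does not read the pcap file format itself.

```python
import struct
from typing import Optional, Tuple

VXLAN_PORT = 1024  # assumption: matches "vxlan udp port 1024" on the VTEPs


def _mac(raw: bytes) -> str:
    """Format 6 bytes in the IOS dotted style, for example 0050.56ad.1ad8."""
    h = raw.hex()
    return f"{h[0:4]}.{h[4:8]}.{h[8:12]}"


def decode_vxlan(frame: bytes, port: int = VXLAN_PORT) -> Optional[Tuple[int, str, str]]:
    """Return (vni, inner_dst_mac, inner_src_mac) for an Ethernet/IPv4/UDP/VXLAN frame, else None."""
    if len(frame) < 34 or struct.unpack("!H", frame[12:14])[0] != 0x0800:
        return None                                  # not untagged IPv4
    ip = frame[14:]
    if ip[0] >> 4 != 4 or ip[9] != 17:
        return None                                  # not IPv4 carrying UDP
    ihl = (ip[0] & 0x0F) * 4                         # IPv4 header length in bytes
    udp = ip[ihl:]
    if len(udp) < 8 + 8 + 14:
        return None                                  # too short for UDP + VXLAN + inner Ethernet
    if struct.unpack("!H", udp[2:4])[0] != port:
        return None                                  # destination port is not the VXLAN port
    vxlan, inner = udp[8:16], udp[16:]
    if not vxlan[0] & 0x08:
        return None                                  # VXLAN I flag not set
    vni = int.from_bytes(vxlan[4:7], "big")
    return vni, _mac(inner[0:6]), _mac(inner[6:12])
```

Pass it the raw bytes of each captured frame; frames that are not VXLAN on the expected port return None.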

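These examples show how a simple Internet Control Message Protocol (ICMP) exchange works over VXLAN.

Address Resolution Protocol (ARP) request sent over the VXLAN overlay:

ARP response:

ICMP request:

ICMP response:

Additional Debug and Troubleshooting Commands

This section describes some additional debug and troubleshooting commands.

In this example, the relevant lines of the debug (the failed IGMP add and the "Unable to join mcast core tree" errors) show that the NVE interface could not join the multicast group. As a result, VXLAN encapsulation was not enabled for VNI 6002. These debug results point to a multicast problem in the network.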

#debug nve all

*Jan 5 06:13:55.844: NVE-MGR-DB: creating mcast node for 10.0.0.10

*Jan 5 06:13:55.846: NVE-MGR-MCAST: IGMP add for (0.0.0.0,10.0.0.10) was failure

*Jan 5 06:13:55.846: NVE-MGR-DB ERROR: Unable to join mcast core tree

*Jan 5 06:13:55.846: NVE-MGR-DB ERROR: Unable to join mcast core tree

*Jan 5 06:13:55.846: NVE-MGR-STATE ERROR: vni 6002: error in create notification to mcast

*Jan 5 06:13:55.846: NVE-MGR-STATE ERROR: vni 6002: error in create notification to mcast

*Jan 5 06:13:55.849: NVE-MGR-TUNNEL: Tunnel Endpoint 10.0.0.10 added

*Jan 5 06:13:55.849: NVE-MGR-TUNNEL: Endpoint 10.0.0.10 added

*Jan 5 06:13:55.851: NVE-MGR-EI: Notifying BD engine of VNI 6002 create

*Jan 5 06:13:55.857: NVE-MGR-DB: Return vni 6002 for pi_hdl[0x437C9B28]

*Jan 5 06:13:55.857: NVE-MGR-EI: VNI 6002: BD state changed to up, vni state to Down
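The lines to look for are the failed IGMP add for the VNI's multicast group and the final `vni state to Down`. A hypothetical Python helper that scans saved `debug nve all` output for exactly those messages; the patterns are copied from the output above and may not match other software releases.

```python
import re
from typing import Dict, List

IGMP_FAIL = re.compile(r"IGMP add for \(([\d.]+),([\d.]+)\) was failure")
VNI_STATE = re.compile(r"VNI (\d+): BD state changed to (\w+), vni state to (\w+)")


def diagnose_nve_debug(text: str) -> Dict[str, object]:
    """Summarize multicast join failures and the final VNI states in `debug nve` output."""
    failed_groups: List[str] = [m.group(2) for m in IGMP_FAIL.finditer(text)]
    vni_states: Dict[int, str] = {}
    for m in VNI_STATE.finditer(text):
        vni_states[int(m.group(1))] = m.group(3)      # the last state reported wins
    return {
        "failed_groups": failed_groups,
        "vni_states": vni_states,
        "multicast_problem": bool(failed_groups) or "Unable to join mcast core tree" in text,
    }
```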

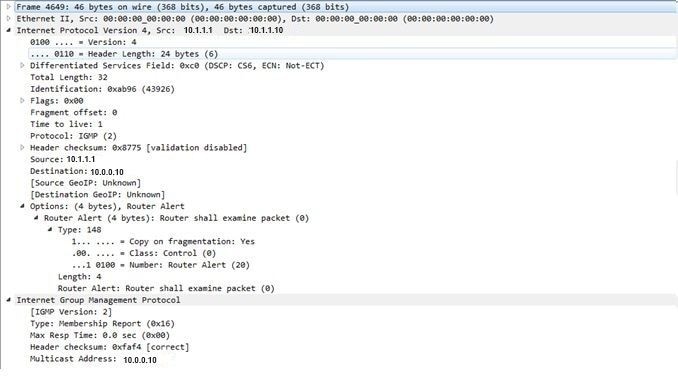

Here is the Internet Group Management Protocol (IGMP) membership report that can be sent after the VNI joins the multicast group:
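For reference, an IGMPv2 Membership Report (RFC 2236) is an 8-byte message: type 0x16, a max response time of 0, a 16-bit one's-complement checksum, and the group address. It is sent to the group address being reported. The IGMP version the VTEP uses depends on its configuration, so IGMPv2 is an assumption here. A Python sketch that builds the message for an example group, 239.0.0.10:

```python
import ipaddress
import struct


def inet_checksum(data: bytes) -> int:
    """16-bit one's-complement checksum used by IP, ICMP, and IGMP."""
    if len(data) % 2:
        data += b"\x00"
    total = sum(struct.unpack(f"!{len(data) // 2}H", data))
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


def igmpv2_membership_report(group: str) -> bytes:
    """Build the 8-byte IGMPv2 Membership Report (type 0x16) for a multicast group."""
    addr = ipaddress.IPv4Address(group)
    if not addr.is_multicast:
        raise ValueError(f"{group} is not a multicast address")
    unsummed = struct.pack("!BBH4s", 0x16, 0, 0, addr.packed)
    return struct.pack("!BBH4s", 0x16, 0, inet_checksum(unsummed), addr.packed)


if __name__ == "__main__":
    print(igmpv2_membership_report("239.0.0.10").hex())   # 1600faf4ef00000a
```

Recomputing the checksum over a complete, valid report yields 0, which is a quick way to validate a captured message.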

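When multicast works as expected, this is the debug output you can expect after a VNI is configured for multicast mode under the NVE interface: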

*Jan 5 06:19:20.335: NVE-MGR-DB: [IF 0x14]VNI node creation

*Jan 5 06:19:20.335: NVE-MGR-DB: VNI Node created [437C9B28]

*Jan 5 06:19:20.336: NVE-MGR-PD: VNI 6002 create notification to PD

*Jan 5 06:19:20.336: NVE-MGR-PD: VNI 6002 Create notif successful, map [pd 0x1020017] to [pi 0x437C9B28]

*Jan 5 06:19:20.336: NVE-MGR-DB: creating mcast node for 10.0.0.10

*Jan 5 06:19:20.342: NVE-MGR-MCAST: IGMP add for (0.0.0.0,10.0.0.10) was successful

*Jan 5 06:19:20.345: NVE-MGR-TUNNEL: Tunnel Endpoint 10.0.0.10 added

*Jan 5 06:19:20.345: NVE-MGR-TUNNEL: Endpoint 10.0.0.10 added

*Jan 5 06:19:20.347: NVE-MGR-EI: Notifying BD engine of VNI 6002 create

*Jan 5 06:19:20.347: NVE-MGR-DB: Return pd_hdl[0x1020017] for pi_hdl[0x437C9B28]

*Jan 5 06:19:20.347: NVE-MGR-DB: Return vni 6002 for pi_hdl[0x437C9B28]

*Jan 5 06:19:20.349: NVE-MGR-DB: Return vni state Create for pi_hdl[0x437C9B28]

*Jan 5 06:19:20.349: NVE-MGR-DB: Return vni state Create for pi_hdl[0x437C9B28]

*Jan 5 06:19:20.349: NVE-MGR-DB: Return vni 6002 for pi_hdl[0x437C9B28]

*Jan 5 06:19:20.351: NVE-MGR-EI: L2FIB query for info 0x437C9B28

*Jan 5 06:19:20.351: NVE-MGR-EI: PP up notification for bd_id 3

*Jan 5 06:19:20.351: NVE-MGR-DB: Return vni 6002 for pi_hdl[0x437C9B28]

*Jan 5 06:19:20.352: NVE-MGR-STATE: vni 6002: Notify clients of state change Create to Up

*Jan 5 06:19:20.352: NVE-MGR-DB: Return vni 6002 for pi_hdl[0x437C9B28]

*Jan 5 06:19:20.353: NVE-MGR-PD: VNI 6002 Create to Up State update to PD successful

*Jan 5 06:19:20.353: NVE-MGR-EI: VNI 6002: BD state changed to up, vni state to Up

*Jan 5 06:19:20.353: NVE-MGR-STATE: vni 6002: No state change Up

*Jan 5 06:19:20.353: NVE-MGR-STATE: vni 6002: New State as a result of create Up

Related Information