Remplacement du serveur contrôleur UCS C240 M4 - vEPC

Options de téléchargement

Langage exempt de préjugés

Dans le cadre de la documentation associée à ce produit, nous nous efforçons d’utiliser un langage exempt de préjugés. Dans cet ensemble de documents, le langage exempt de discrimination renvoie à une langue qui exclut la discrimination en fonction de l’âge, des handicaps, du genre, de l’appartenance raciale de l’identité ethnique, de l’orientation sexuelle, de la situation socio-économique et de l’intersectionnalité. Des exceptions peuvent s’appliquer dans les documents si le langage est codé en dur dans les interfaces utilisateurs du produit logiciel, si le langage utilisé est basé sur la documentation RFP ou si le langage utilisé provient d’un produit tiers référencé. Découvrez comment Cisco utilise le langage inclusif.

À propos de cette traduction

Cisco a traduit ce document en traduction automatisée vérifiée par une personne dans le cadre d’un service mondial permettant à nos utilisateurs d’obtenir le contenu d’assistance dans leur propre langue. Il convient cependant de noter que même la meilleure traduction automatisée ne sera pas aussi précise que celle fournie par un traducteur professionnel.

Table des matières

Introduction

Ce document décrit les étapes requises pour remplacer un serveur de contrôleur défectueux dans une configuration Ultra-M qui héberge des fonctions de réseau virtuel (VNF) StarOS.

Informations générales

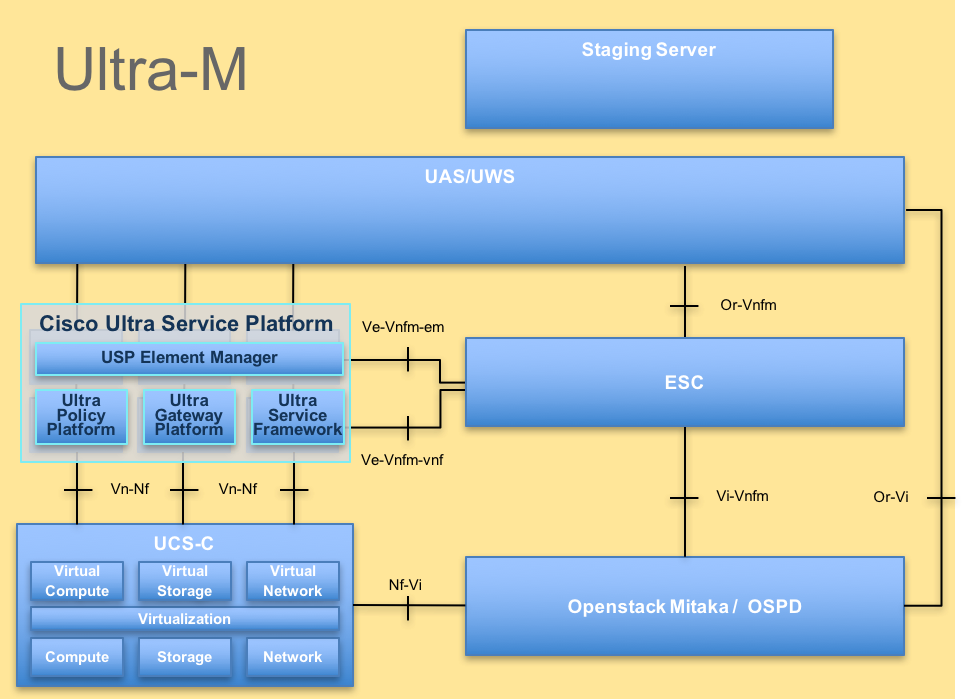

Ultra-M est une solution de coeur de réseau de paquets mobile virtualisée préemballée et validée, conçue pour simplifier le déploiement des VNF. OpenStack est le gestionnaire d'infrastructure virtualisée (VIM) pour Ultra-M et se compose des types de noeuds suivants :

- Calculer

- Disque de stockage d'objets - Calcul (OSD - Calcul)

- Contrôleur

- Plate-forme OpenStack - Director (OSPD)

L'architecture de haut niveau d'Ultra-M et les composants impliqués sont représentés dans cette image :

Architecture UltraM

Architecture UltraM

Ce document est destiné au personnel Cisco qui connaît bien la plate-forme Cisco Ultra-M et détaille les étapes qui doivent être effectuées au niveau OpenStack et StarOS VNF au moment du remplacement du serveur de contrôleur.

Remarque : La version Ultra M 5.1.x est prise en compte afin de définir les procédures dans ce document.

Abréviations

| VNF | Fonction de réseau virtuel |

| CF | Fonction De Commande |

| SF | Fonction de service |

| ESC | Contrôleur de service élastique |

| SERPILLIÈRE | Méthode de procédure |

| OSD | Disques de stockage d'objets |

| HDD | Disque dur |

| SSD | Disque dur SSD |

| VIM | Gestionnaire d'infrastructure virtuelle |

| VM | Machine virtuelle |

| EM | Gestionnaire d'éléments |

| SAMU | Services d’automatisation ultra |

| UUID | Identificateur Universally Unique |

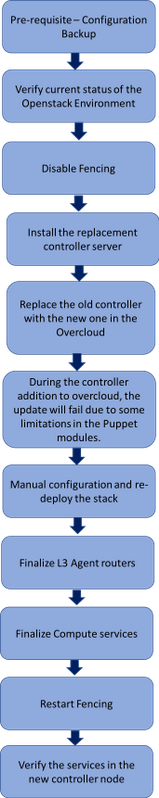

Flux de travail de la musique d'attente

Workflow de haut niveau de la procédure de remplacement

Workflow de haut niveau de la procédure de remplacement

Conditions préalables

Sauvegarde

En cas de récupération, Cisco recommande d'effectuer une sauvegarde de la base de données OSPD (DB) en procédant comme suit :

[root@director ~]# mysqldump --opt --all-databases > /root/undercloud-all-databases.sql

[root@director ~]# tar --xattrs -czf undercloud-backup-`date +%F`.tar.gz /root/undercloud-all-databases.sql

/etc/my.cnf.d/server.cnf /var/lib/glance/images /srv/node /home/stack

tar: Removing leading `/' from member names

Vérification préliminaire du statut

Il est important de vérifier l'état actuel de l'environnement et des services OpenStack et de s'assurer qu'ils sont sains avant de poursuivre la procédure de remplacement. Il peut aider à éviter les complications au moment du processus de remplacement du contrôleur.

- Vérifiez l'état d'OpenStack et la liste des noeuds :

[stack@director ~]$ source stackrc

[stack@director ~]$ openstack stack list --nested

[stack@director ~]$ ironic node-list

[stack@director ~]$ nova list

- Vérifiez l'état du Pacemaker sur les contrôleurs :

Connectez-vous à l'un des contrôleurs actifs et vérifiez l'état du stimulateur. Tous les services doivent être exécutés sur les contrôleurs disponibles et arrêtés sur le contrôleur défaillant.

[stack@pod1-controller-0 ~]# pcs status

<snip>

Online: [ pod1-controller-0 pod1-controller-1 ]

OFFLINE: [ pod1-controller-2 ]

Full list of resources:

ip-11.120.0.109 (ocf::heartbeat:IPaddr2): Started pod1-controller-0

ip-172.25.22.109 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-192.200.0.107 (ocf::heartbeat:IPaddr2): Started pod1-controller-0

Clone Set: haproxy-clone [haproxy]

Started: [ pod1-controller-0 pod1-controller-1 ]

Stopped: [ pod1-controller-2 ]

Master/Slave Set: galera-master [galera]

Masters: [ pod1-controller-0 pod1-controller-1 ]

Stopped: [ pod1-controller-2 ]

ip-11.120.0.110 (ocf::heartbeat:IPaddr2): Started pod1-controller-0

ip-11.119.0.110 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

Clone Set: rabbitmq-clone [rabbitmq]

Started: [ pod1-controller-0 pod1-controller-1 ]

Stopped: [ pod1-controller-2 ]

Master/Slave Set: redis-master [redis]

Masters: [ pod1-controller-0 ]

Slaves: [ pod1-controller-1 ]

Stopped: [ pod1-controller-2 ]

ip-11.118.0.104 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

openstack-cinder-volume (systemd:openstack-cinder-volume): Started pod1-controller-0

my-ipmilan-for-controller-6 (stonith:fence_ipmilan): Started pod1-controller-1

my-ipmilan-for-controller-4 (stonith:fence_ipmilan): Started pod1-controller-0

my-ipmilan-for-controller-7 (stonith:fence_ipmilan): Started pod1-controller-0

Failed Actions:

Daemon Status:

corosync: active/enabled

pacemaker: active/enabled

pcsd: active/enabled

Dans cet exemple, Controller-2 est hors ligne. Il sera donc remplacé par le texte suivant : Les contrôleurs 0 et 1 sont opérationnels et exécutent les services de cluster.

- Vérifiez l'état MariaDB dans les contrôleurs actifs :

[stack@director] nova list | grep control

| 4361358a-922f-49b5-89d4-247a50722f6d | pod1-controller-0 | ACTIVE | - | Running | ctlplane=192.200.0.102 |

| d0f57f27-93a8-414f-b4d8-957de0d785fc | pod1-controller-1 | ACTIVE | - | Running | ctlplane=192.200.0.110 |

[stack@director ~]$ for i in 192.200.0.102 192.200.0.110 ; do echo "*** $i ***" ; ssh heat-admin@$i "sudo mysql --exec=\"SHOW STATUS LIKE 'wsrep_local_state_comment'\" ; sudo mysql --exec=\"SHOW STATUS LIKE 'wsrep_cluster_size'\""; done

*** 192.200.0.152 ***

Variable_name Value

wsrep_local_state_comment Synced

Variable_name Value

wsrep_cluster_size 2

*** 192.200.0.154 ***

Variable_name Value

wsrep_local_state_comment Synced

Variable_name Value

wsrep_cluster_size 2

Vérifiez que ces lignes sont présentes pour chaque contrôleur actif :

wsrep_local_state_comment : Synchronisé

wsrep_cluster_size : 2

- Vérifiez l'état Rabbitmq dans les contrôleurs actifs. Le contrôleur défaillant ne doit pas apparaître dans la liste des noeuds en cours d'exécution.

[heat-admin@pod1-controller-0 ~] sudo rabbitmqctl cluster_status

Cluster status of node 'rabbit@pod1-controller-0' ...

[{nodes,[{disc,['rabbit@pod1-controller-0','rabbit@pod1-controller-1',

'rabbit@pod1-controller-2']}]},

{running_nodes,['rabbit@pod1-controller-1',

'rabbit@pod1-controller-0']},

{cluster_name,<<"rabbit@pod1-controller-2.localdomain">>},

{partitions,[]},

{alarms,[{'rabbit@pod1-controller-1',[]},

{'rabbit@pod1-controller-0',[]}]}]

[heat-admin@pod1-controller-1 ~] sudo rabbitmqctl cluster_status

Cluster status of node 'rabbit@pod1-controller-1' ...

[{nodes,[{disc,['rabbit@pod1-controller-0','rabbit@pod1-controller-1',

'rabbit@pod1-controller-2']}]},

{running_nodes,['rabbit@pod1-controller-0',

'rabbit@pod1-controller-1']},

{cluster_name,<<"rabbit@pod1-controller-2.localdomain">>},

{partitions,[]},

{alarms,[{'rabbit@pod1-controller-0',[]},

{'rabbit@pod1-controller-1',[]}]}]

- Vérifiez si tous les services sous-cloud sont en état chargé, actif et en cours d'exécution à partir du noeud OSP-D.

[stack@director ~]$ systemctl list-units "openstack*" "neutron*" "openvswitch*"

UNIT LOAD ACTIVE SUB DESCRIPTION

neutron-dhcp-agent.service loaded active running OpenStack Neutron DHCP Agent

neutron-openvswitch-agent.service loaded active running OpenStack Neutron Open vSwitch Agent

neutron-ovs-cleanup.service loaded active exited OpenStack Neutron Open vSwitch Cleanup Utility

neutron-server.service loaded active running OpenStack Neutron Server

openstack-aodh-evaluator.service loaded active running OpenStack Alarm evaluator service

openstack-aodh-listener.service loaded active running OpenStack Alarm listener service

openstack-aodh-notifier.service loaded active running OpenStack Alarm notifier service

openstack-ceilometer-central.service loaded active running OpenStack ceilometer central agent

openstack-ceilometer-collector.service loaded active running OpenStack ceilometer collection service

openstack-ceilometer-notification.service loaded active running OpenStack ceilometer notification agent

openstack-glance-api.service loaded active running OpenStack Image Service (code-named Glance) API server

openstack-glance-registry.service loaded active running OpenStack Image Service (code-named Glance) Registry server

openstack-heat-api-cfn.service loaded active running Openstack Heat CFN-compatible API Service

openstack-heat-api.service loaded active running OpenStack Heat API Service

openstack-heat-engine.service loaded active running Openstack Heat Engine Service

openstack-ironic-api.service loaded active running OpenStack Ironic API service

openstack-ironic-conductor.service loaded active running OpenStack Ironic Conductor service

openstack-ironic-inspector-dnsmasq.service loaded active running PXE boot dnsmasq service for Ironic Inspector

openstack-ironic-inspector.service loaded active running Hardware introspection service for OpenStack Ironic

openstack-mistral-api.service loaded active running Mistral API Server

openstack-mistral-engine.service loaded active running Mistral Engine Server

openstack-mistral-executor.service loaded active running Mistral Executor Server

openstack-nova-api.service loaded active running OpenStack Nova API Server

openstack-nova-cert.service loaded active running OpenStack Nova Cert Server

openstack-nova-compute.service loaded active running OpenStack Nova Compute Server

openstack-nova-conductor.service loaded active running OpenStack Nova Conductor Server

openstack-nova-scheduler.service loaded active running OpenStack Nova Scheduler Server

openstack-swift-account-reaper.service loaded active running OpenStack Object Storage (swift) - Account Reaper

openstack-swift-account.service loaded active running OpenStack Object Storage (swift) - Account Server

openstack-swift-container-updater.service loaded active running OpenStack Object Storage (swift) - Container Updater

openstack-swift-container.service loaded active running OpenStack Object Storage (swift) - Container Server

openstack-swift-object-updater.service loaded active running OpenStack Object Storage (swift) - Object Updater

openstack-swift-object.service loaded active running OpenStack Object Storage (swift) - Object Server

openstack-swift-proxy.service loaded active running OpenStack Object Storage (swift) - Proxy Server

openstack-zaqar.service loaded active running OpenStack Message Queuing Service (code-named Zaqar) Server

openstack-zaqar@1.service loaded active running OpenStack Message Queuing Service (code-named Zaqar) Server Instance 1

openvswitch.service loaded active exited Open vSwitch

LOAD = Reflects whether the unit definition was properly loaded.

ACTIVE = The high-level unit activation state, i.e. generalization of SUB.

SUB = The low-level unit activation state, values depend on unit type.

37 loaded units listed. Pass --all to see loaded but inactive units, too.

To show all installed unit files use 'systemctl list-unit-files'.

Désactiver la délimitation dans le cluster de contrôleurs

[root@pod1-controller-0 ~]# sudo pcs property set stonith-enabled=false

[root@pod1-controller-0 ~]# pcs property show

Cluster Properties:

cluster-infrastructure: corosync

cluster-name: tripleo_cluster

dc-version: 1.1.15-11.el7_3.4-e174ec8

have-watchdog: false

last-lrm-refresh: 1510809585

maintenance-mode: false

redis_REPL_INFO: pod1-controller-0

stonith-enabled: false

Node Attributes:

pod1-controller-0: rmq-node-attr-last-known-rabbitmq=rabbit@pod1-controller-0

pod1-controller-1: rmq-node-attr-last-known-rabbitmq=rabbit@pod1-controller-1

pod1-controller-2: rmq-node-attr-last-known-rabbitmq=rabbit@pod1-controller-2

Installation du nouveau noeud de contrôleur

- Les étapes d'installation d'un nouveau serveur UCS C240 M4 et les étapes de configuration initiale sont décrites ci-dessous :

Guide d'installation et de maintenance du serveur Cisco UCS C240 M4

- Connectez-vous au serveur à l'aide de l'adresse IP CIMC

- Procédez à la mise à niveau du BIOS si le micrologiciel n'est pas conforme à la version recommandée utilisée précédemment. Les étapes de la mise à niveau du BIOS sont indiquées ici :

Guide de mise à niveau du BIOS du serveur rack Cisco UCS série C

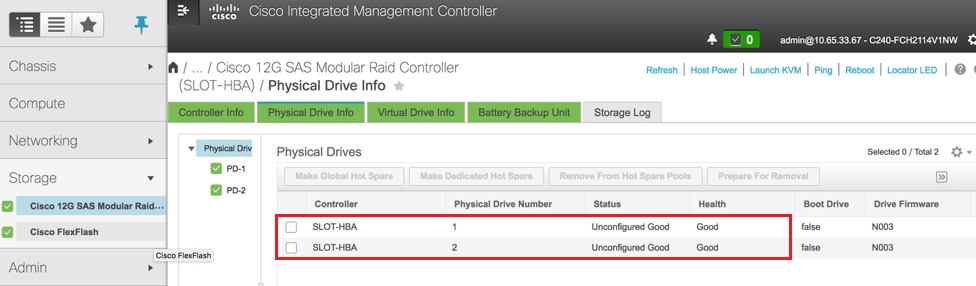

- Vérifiez l'état des lecteurs physiques. Il doit être « Non configuré correctement » :

Stockage > Contrôleur RAID modulaire SAS Cisco 12G (SLOT-HBA) > Informations sur le disque physique

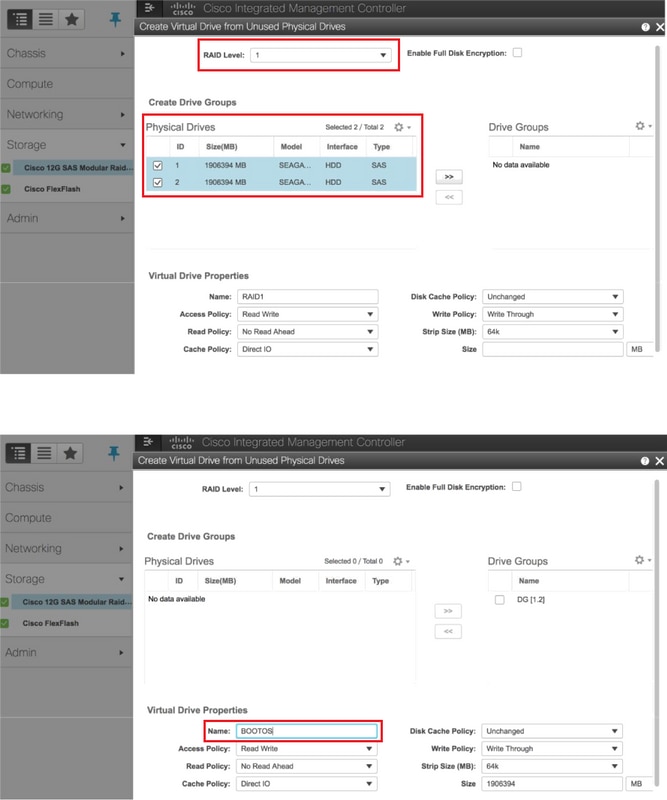

- Créez un disque virtuel à partir des disques physiques avec le niveau RAID 1 :

Stockage > Contrôleur RAID modulaire SAS Cisco 12G (SLOT-HBA) > Informations sur le contrôleur > Créer un disque virtuel à partir de disques physiques inutilisés

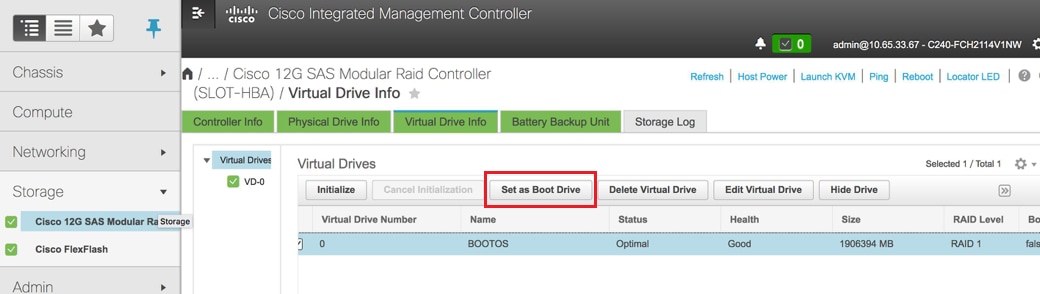

- Sélectionnez le VD et configurez Set as Boot Drive :

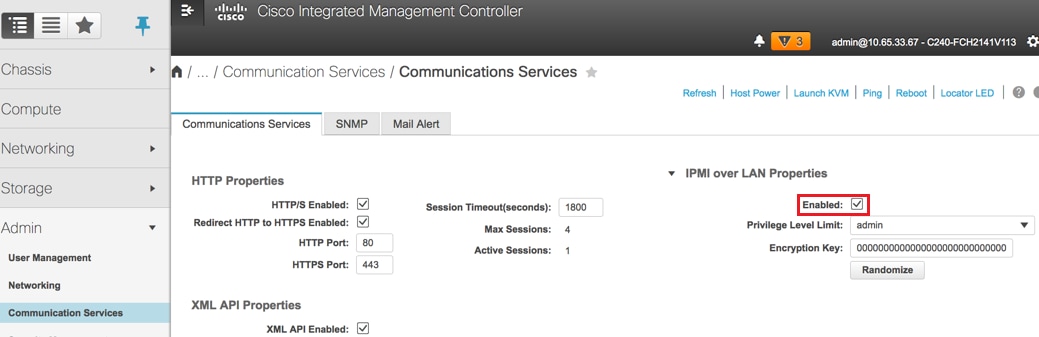

- Activer IPMI sur LAN :

Admin > Services de communication > Services de communication

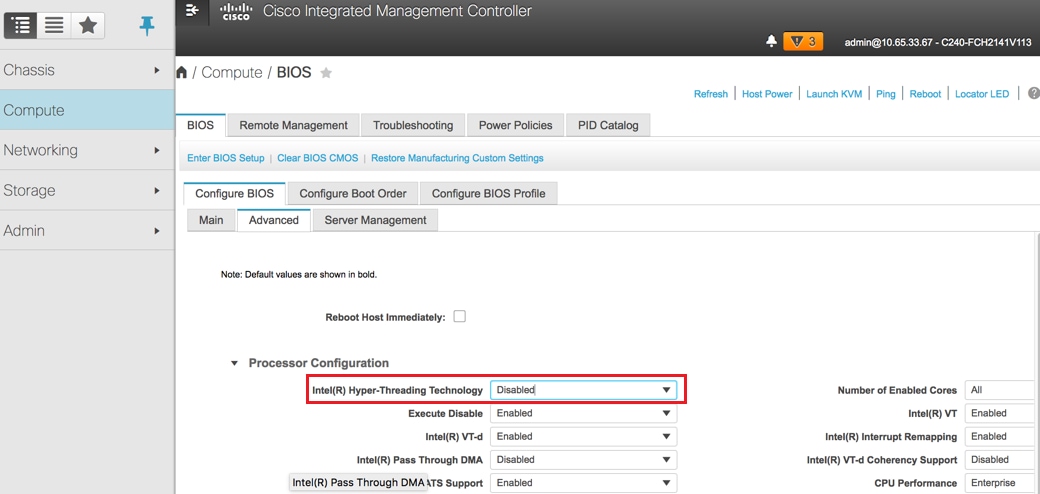

- Désactiver l'hyperthreading :

Calcul > BIOS > Configurer le BIOS > Avancé > Configuration du processeur

Remarque : L'image illustrée ici et les étapes de configuration mentionnées dans cette section font référence à la version 3.0(3e) du micrologiciel et il peut y avoir de légères variations si vous travaillez sur d'autres versions.

Remplacement du noeud du contrôleur dans Overcloud

Cette section couvre les étapes requises pour remplacer le contrôleur défectueux par le nouveau dans le cloud. Pour cela, le script deploy.sh qui a été utilisé pour activer la pile serait réutilisé. Au moment du déploiement, dans la phase ControllerNodesPostDeployment, la mise à jour échouerait en raison de certaines limitations dans les modules Puppet. Une intervention manuelle est requise avant de redémarrer le script de déploiement.

Préparation de la suppression du noeud de contrôleur défaillant

- Identifiez l'index du contrôleur défaillant. L'index est le suffixe numérique du nom du contrôleur dans la sortie de la liste de serveurs OpenStack. Dans cet exemple, l'index est 2 :

[stack@director ~]$ nova list | grep controller

| 5813a47e-af27-4fb9-8560-75decd3347b4 | pod1-controller-0 | ACTIVE | - | Running | ctlplane=192.200.0.152 |

| 457f023f-d077-45c9-bbea-dd32017d9708 | pod1-controller-1 | ACTIVE | - | Running | ctlplane=192.200.0.154 |

| d13bb207-473a-4e42-a1e7-05316935ed65 | pod1-controller-2 | ACTIVE | - | Running | ctlplane=192.200.0.151 |

- Créez un fichier Yaml ~templates/remove-controller.yaml qui définirait le noeud à supprimer. Utilisez l'index trouvé à l'étape précédente pour l'entrée de la liste de ressources :

[stack@director ~]$ cat templates/remove-controller.yaml

parameters:

ControllerRemovalPolicies:

[{'resource_list': [‘2’]}]

parameter_defaults:

CorosyncSettleTries: 5

- Faites une copie du script de déploiement qui est utilisé afin d'installer le overcloud et insérez une ligne afin d'inclure le fichier remove-controller.yaml créé précédemment :

[stack@director ~]$ cp deploy.sh deploy-removeController.sh

[stack@director ~]$ cat deploy-removeController.sh

time openstack overcloud deploy --templates \

-r ~/custom-templates/custom-roles.yaml \

-e /home/stack/templates/remove-controller.yaml \

-e /usr/share/openstack-tripleo-heat-templates/environments/puppet-pacemaker.yaml \

-e /usr/share/openstack-tripleo-heat-templates/environments/network-isolation.yaml \

-e /usr/share/openstack-tripleo-heat-templates/environments/storage-environment.yaml \

-e /usr/share/openstack-tripleo-heat-templates/environments/neutron-sriov.yaml \

-e ~/custom-templates/network.yaml \

-e ~/custom-templates/ceph.yaml \

-e ~/custom-templates/compute.yaml \

-e ~/custom-templates/layout-removeController.yaml \

-e ~/custom-templates/rabbitmq.yaml \

--stack pod1 \

--debug \

--log-file overcloudDeploy_$(date +%m_%d_%y__%H_%M_%S).log \

--neutron-flat-networks phys_pcie1_0,phys_pcie1_1,phys_pcie4_0,phys_pcie4_1 \

--neutron-network-vlan-ranges datacentre:101:200 \

--neutron-disable-tunneling \

--verbose --timeout 180

- Identifiez l'ID du contrôleur à remplacer à l'aide des commandes mentionnées ici et passez en mode maintenance :

[stack@director ~]$ nova list | grep controller

| 5813a47e-af27-4fb9-8560-75decd3347b4 | pod1-controller-0 | ACTIVE | - | Running | ctlplane=192.200.0.152 |

| 457f023f-d077-45c9-bbea-dd32017d9708 | pod1-controller-1 | ACTIVE | - | Running | ctlplane=192.200.0.154 |

| d13bb207-473a-4e42-a1e7-05316935ed65 | pod1-controller-2 | ACTIVE | - | Running | ctlplane=192.200.0.151 |

[stack@director ~]$ openstack baremetal node list | grep d13bb207-473a-4e42-a1e7-05316935ed65

| e7c32170-c7d1-4023-b356-e98564a9b85b | None | d13bb207-473a-4e42-a1e7-05316935ed65 | power off | active | False |

[stack@b10-ospd ~]$ openstack baremetal node maintenance set e7c32170-c7d1-4023-b356-e98564a9b85b

[stack@director~]$ openstack baremetal node list | grep True

| e7c32170-c7d1-4023-b356-e98564a9b85b | None | d13bb207-473a-4e42-a1e7-05316935ed65 | power off | active | True |

- Afin de s'assurer que le DB fonctionne au moment de la procédure de remplacement, retirez Galera du contrôle du stimulateur cardiaque et exécutez cette commande sur l'un des contrôleurs actifs :

[root@pod1-controller-0 ~]# sudo pcs resource unmanage galera

[root@pod1-controller-0 ~]# sudo pcs status

Cluster name: tripleo_cluster

Stack: corosync

Current DC: pod1-controller-0 (version 1.1.15-11.el7_3.4-e174ec8) - partition with quorum

Last updated: Thu Nov 16 16:51:18 2017 Last change: Thu Nov 16 16:51:12 2017 by root via crm_resource on pod1-controller-0

3 nodes and 22 resources configured

Online: [ pod1-controller-0 pod1-controller-1 ]

OFFLINE: [ pod1-controller-2 ]

Full list of resources:

ip-11.120.0.109 (ocf::heartbeat:IPaddr2): Started pod1-controller-0

ip-172.25.22.109 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

ip-192.200.0.107 (ocf::heartbeat:IPaddr2): Started pod1-controller-0

Clone Set: haproxy-clone [haproxy]

Started: [ pod1-controller-0 pod1-controller-1 ]

Stopped: [ pod1-controller-2 ]

Master/Slave Set: galera-master [galera] (unmanaged)

galera (ocf::heartbeat:galera): Master pod1-controller-0 (unmanaged)

galera (ocf::heartbeat:galera): Master pod1-controller-1 (unmanaged)

Stopped: [ pod1-controller-2 ]

ip-11.120.0.110 (ocf::heartbeat:IPaddr2): Started pod1-controller-0

ip-11.119.0.110 (ocf::heartbeat:IPaddr2): Started pod1-controller-1

<snip>

Préparation à l'ajout d'un nouveau noeud contrôleur

- Créez un fichier controllerRMA.json avec uniquement les nouveaux détails du contrôleur. Assurez-vous que le numéro d'index sur le nouveau contrôleur n'a pas été utilisé auparavant. En règle générale, incrémentez jusqu'au numéro de contrôleur suivant le plus élevé.

Exemple : La valeur la plus élevée était Controller-2. Créez donc Controller-3.

Remarque : Gardez à l'esprit le format json.

[stack@director ~]$ cat controllerRMA.json

{

"nodes": [

{

"mac": [

<MAC_ADDRESS>

],

"capabilities": "node:controller-3,boot_option:local",

"cpu": "24",

"memory": "256000",

"disk": "3000",

"arch": "x86_64",

"pm_type": "pxe_ipmitool",

"pm_user": "admin",

"pm_password": "<PASSWORD>",

"pm_addr": "<CIMC_IP>"

}

]

}

- Importez le nouveau noeud à l'aide du fichier json créé à l'étape précédente :

[stack@director ~]$ openstack baremetal import --json controllerRMA.json

Started Mistral Workflow. Execution ID: 67989c8b-1225-48fe-ba52-3a45f366e7a0

Successfully registered node UUID 048ccb59-89df-4f40-82f5-3d90d37ac7dd

Started Mistral Workflow. Execution ID: c6711b5f-fa97-4c86-8de5-b6bc7013b398

Successfully set all nodes to available.

[stack@director ~]$ openstack baremetal node list | grep available

| 048ccb59-89df-4f40-82f5-3d90d37ac7dd | None | None | power off | available | False

- Définissez le noeud pour gérer l'état :

[stack@director ~]$ openstack baremetal node manage 048ccb59-89df-4f40-82f5-3d90d37ac7dd

[stack@director ~]$ openstack baremetal node list | grep off

| 048ccb59-89df-4f40-82f5-3d90d37ac7dd | None | None | power off | manageable | False |

- Exécuter l'introspection :

[stack@director ~]$ openstack overcloud node introspect 048ccb59-89df-4f40-82f5-3d90d37ac7dd --provide

Started Mistral Workflow. Execution ID: f73fb275-c90e-45cc-952b-bfc25b9b5727

Waiting for introspection to finish...

Successfully introspected all nodes.

Introspection completed.

Started Mistral Workflow. Execution ID: a892b456-eb15-4c06-b37e-5bc3f6c37c65

Successfully set all nodes to available

[stack@director ~]$ openstack baremetal node list | grep available

| 048ccb59-89df-4f40-82f5-3d90d37ac7dd | None | None | power off | available | False |

- Marquez le noeud disponible avec les nouvelles propriétés du contrôleur. Assurez-vous d'utiliser l'ID de contrôleur désigné pour le nouveau contrôleur, tel qu'utilisé dans le fichier controllerRMA.json :

[stack@director ~]$ openstack baremetal node set --property capabilities='node:controller-3,profile:control,boot_option:local' 048ccb59-89df-4f40-82f5-3d90d37ac7dd

- Dans le script de déploiement, il y a un modèle personnalisé appelé layout.yaml qui, entre autres, spécifie quelles adresses IP sont attribuées aux contrôleurs pour les différentes interfaces. Sur une nouvelle pile, 3 adresses sont définies pour Controller-0, Controller-1 et Controller-2. Lorsque vous ajoutez un nouveau contrôleur, assurez-vous d'ajouter une adresse IP suivante dans l'ordre pour chaque sous-réseau :

ControllerIPs:

internal_api:

- 11.120.0.10

- 11.120.0.11

- 11.120.0.12

- 11.120.0.13

tenant:

- 11.117.0.10

- 11.117.0.11

- 11.117.0.12

- 11.117.0.13

storage:

- 11.118.0.10

- 11.118.0.11

- 11.118.0.12

- 11.118.0.13

storage_mgmt:

- 11.119.0.10

- 11.119.0.11

- 11.119.0.12

- 11.119.0.13

- Exécutez à présent le deploy-removecontroller.sh qui a été créé précédemment, afin de supprimer l'ancien noeud et d'ajouter le nouveau noeud.

Remarque : Cette étape est censée échouer dans ControllerNodesDeployment_Step1. À ce stade, une intervention manuelle est requise.

[stack@b10-ospd ~]$ ./deploy-addController.sh

START with options: [u'overcloud', u'deploy', u'--templates', u'-r', u'/home/stack/custom-templates/custom-roles.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/puppet-pacemaker.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/network-isolation.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/storage-environment.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/neutron-sriov.yaml', u'-e', u'/home/stack/custom-templates/network.yaml', u'-e', u'/home/stack/custom-templates/ceph.yaml', u'-e', u'/home/stack/custom-templates/compute.yaml', u'-e', u'/home/stack/custom-templates/layout-removeController.yaml', u'-e', u'/home/stack/custom-templates/rabbitmq.yaml', u'--stack', u'newtonoc', u'--debug', u'--log-file', u'overcloudDeploy_11_15_17__07_46_35.log', u'--neutron-flat-networks', u'phys_pcie1_0,phys_pcie1_1,phys_pcie4_0,phys_pcie4_1', u'--neutron-network-vlan-ranges', u'datacentre:101:200', u'--neutron-disable-tunneling', u'--verbose', u'--timeout', u'180']

:

DeploymentError: Heat Stack update failed

END return value: 1

real 42m1.525s

user 0m3.043s

sys 0m0.614s

La progression/l'état du déploiement peut être surveillé à l'aide des commandes suivantes :

[stack@director~]$ openstack stack list --nested | grep -iv complete

+--------------------------------------+-------------------------------------------------------------------------------------------------------------------------------------------------------------------------+-----------------+----------------------+----------------------+--------------------------------------+

| ID | Stack Name | Stack Status | Creation Time | Updated Time | Parent |

+--------------------------------------+-------------------------------------------------------------------------------------------------------------------------------------------------------------------------+-----------------+----------------------+----------------------+--------------------------------------+

| c1e338f2-877e-4817-93b4-9a3f0c0b3d37 | pod1-AllNodesDeploySteps-5psegydpwxij-ComputeDeployment_Step1-swnuzjixac43 | UPDATE_FAILED | 2017-10-08T14:06:07Z | 2017-11-16T18:09:43Z | e90f00ef-2499-4ec3-90b4-d7def6e97c47 |

| 1db4fef4-45d3-4125-bd96-2cc3297a69ff | pod1-AllNodesDeploySteps-5psegydpwxij-ControllerDeployment_Step1-hmn3hpruubcn | UPDATE_FAILED | 2017-10-08T14:03:05Z | 2017-11-16T18:12:12Z | e90f00ef-2499-4ec3-90b4-d7def6e97c47 |

| e90f00ef-2499-4ec3-90b4-d7def6e97c47 | pod1-AllNodesDeploySteps-5psegydpwxij | UPDATE_FAILED | 2017-10-08T13:59:25Z | 2017-11-16T18:09:25Z | 6c4b604a-55a4-4a19-9141-28c844816c0d |

| 6c4b604a-55a4-4a19-9141-28c844816c0d | pod1 | UPDATE_FAILED | 2017-10-08T12:37:11Z | 2017-11-16T17:35:35Z | None |

+--------------------------------------+-------------------------------------------------------------------------------------------------------------------------------------------------------------------------+-----------------+----------------------+----------------------+--------------------------------------+

Intervention Manuelle

- Sur le serveur OSP-D, exécutez la commande OpenStack server list afin de répertorier les contrôleurs disponibles. Le contrôleur nouvellement ajouté doit apparaître dans la liste :

[stack@director ~]$ openstack server list | grep controller

| 3e6c3db8-ba24-48d9-b0e8-1e8a2eb8b5ff | pod1-controller-3 | ACTIVE | ctlplane=192.200.0.103 | overcloud-full |

| 457f023f-d077-45c9-bbea-dd32017d9708 | pod1-controller-1 | ACTIVE | ctlplane=192.200.0.154 | overcloud-full |

| 5813a47e-af27-4fb9-8560-75decd3347b4 | pod1-controller-0 | ACTIVE | ctlplane=192.200.0.152 | overcloud-full |

- Connectez-vous à l'un des contrôleurs actifs (et non au nouveau contrôleur ajouté) et consultez le fichier /etc/corosync/corosycn.conf. Recherchez la liste de noeuds qui attribue un ID de noeud à chaque contrôleur. Recherchez l'entrée correspondant au noeud défaillant et notez son ID de noeud :

[root@pod1-controller-0 ~]# cat /etc/corosync/corosync.conf

totem {

version: 2

secauth: off

cluster_name: tripleo_cluster

transport: udpu

token: 10000

}

nodelist {

node {

ring0_addr: pod1-controller-0

nodeid: 5

}

node {

ring0_addr: pod1-controller-1

nodeid: 7

}

node {

ring0_addr: pod1-controller-2

nodeid: 8

}

}

- Connectez-vous à chacun des contrôleurs actifs. Supprimez le noeud défaillant et redémarrez le service. Dans ce cas, supprimez pod1-controller-2. N'effectuez pas cette action sur le contrôleur nouvellement ajouté :

[root@pod1-controller-0 ~]# sudo pcs cluster localnode remove pod1-controller-2

pod1-controller-2: successfully removed!

[root@pod1-controller-0 ~]# sudo pcs cluster reload corosync

Corosync reloaded

[root@pod1-controller-1 ~]# sudo pcs cluster localnode remove pod1-controller-2

pod1-controller-2: successfully removed!

[root@pod1-controller-1 ~]# sudo pcs cluster reload corosync

Corosync reloaded

- Exécutez cette commande à partir de l'un des contrôleurs actifs afin de supprimer le noeud défaillant du cluster :

[root@pod1-controller-0 ~]# sudo crm_node -R pod1-controller-2 --force

- Exécutez cette commande à partir de l'un des contrôleurs actifs afin de supprimer le noeud défaillant du cluster rabbitmq :

[root@pod1-controller-0 ~]# sudo rabbitmqctl forget_cluster_node rabbit@pod1-controller-2

Removing node 'rabbit@newtonoc-controller-2' from cluster ...

- Supprimez le noeud défaillant de MongoDB. Pour ce faire, vous devez trouver le noeud Mongo actif. Utilisez netstat pour rechercher l’adresse IP de l’hôte :

[root@pod1-controller-0 ~]# sudo netstat -tulnp | grep 27017

tcp 0 0 11.120.0.10:27017 0.0.0.0:* LISTEN 219577/mongod

- Connectez-vous au noeud et vérifiez s'il est le maître à l'aide de l'adresse IP et du numéro de port de la commande précédente :

[heat-admin@pod1-controller-0 ~]$ echo "db.isMaster()" | mongo --host 11.120.0.10:27017

MongoDB shell version: 2.6.11

connecting to: 11.120.0.10:27017/test

{

"setName" : "tripleo",

"setVersion" : 9,

"ismaster" : true,

"secondary" : false,

"hosts" : [

"11.120.0.10:27017",

"11.120.0.12:27017",

"11.120.0.11:27017"

],

"primary" : "11.120.0.10:27017",

"me" : "11.120.0.10:27017",

"electionId" : ObjectId("5a0d2661218cb0238b582fb1"),

"maxBsonObjectSize" : 16777216,

"maxMessageSizeBytes" : 48000000,

"maxWriteBatchSize" : 1000,

"localTime" : ISODate("2017-11-16T18:36:34.473Z"),

"maxWireVersion" : 2,

"minWireVersion" : 0,

"ok" : 1

}

Si le noeud n'est pas le maître, connectez-vous à l'autre contrôleur actif et effectuez la même étape.

- À partir du maître, listez les noeuds disponibles à l'aide de la commande rs.status() . Recherchez l'ancien noeud/le noeud qui ne répond pas et identifiez le nom du noeud mongo.

[root@pod1-controller-0 ~]# mongo --host 11.120.0.10

MongoDB shell version: 2.6.11

connecting to: 11.120.0.10:27017/test

<snip>

tripleo:PRIMARY> rs.status()

{

"set" : "tripleo",

"date" : ISODate("2017-11-14T13:27:14Z"),

"myState" : 1,

"members" : [

{

"_id" : 0,

"name" : "11.120.0.10:27017",

"health" : 1,

"state" : 1,

"stateStr" : "PRIMARY",

"uptime" : 418347,

"optime" : Timestamp(1510666033, 1),

"optimeDate" : ISODate("2017-11-14T13:27:13Z"),

"electionTime" : Timestamp(1510247693, 1),

"electionDate" : ISODate("2017-11-09T17:14:53Z"),

"self" : true

},

{

"_id" : 2,

"name" : "11.120.0.12:27017",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY",

"uptime" : 418347,

"optime" : Timestamp(1510666033, 1),

"optimeDate" : ISODate("2017-11-14T13:27:13Z"),

"lastHeartbeat" : ISODate("2017-11-14T13:27:13Z"),

"lastHeartbeatRecv" : ISODate("2017-11-14T13:27:13Z"),

"pingMs" : 0,

"syncingTo" : "11.120.0.10:27017"

},

{

"_id" : 3,

"name" : "11.120.0.11:27017

"health" : 0,

"state" : 8,

"stateStr" : "(not reachable/healthy)",

"uptime" : 0,

"optime" : Timestamp(1510610580, 1),

"optimeDate" : ISODate("2017-11-13T22:03:00Z"),

"lastHeartbeat" : ISODate("2017-11-14T13:27:10Z"),

"lastHeartbeatRecv" : ISODate("2017-11-13T22:03:01Z"),

"pingMs" : 0,

"syncingTo" : "11.120.0.10:27017"

}

],

"ok" : 1

}

- À partir du maître, supprimez le noeud défaillant à l'aide de la commande rs.remove. Certaines erreurs apparaissent lorsque vous exécutez cette commande, mais vérifiez à nouveau l’état pour vous assurer que le noeud a été supprimé :

[root@pod1-controller-0 ~]$ mongo --host 11.120.0.10

<snip>

tripleo:PRIMARY> rs.remove('11.120.0.12:27017')

2017-11-16T18:41:04.999+0000 DBClientCursor::init call() failed

2017-11-16T18:41:05.000+0000 Error: error doing query: failed at src/mongo/shell/query.js:81

2017-11-16T18:41:05.001+0000 trying reconnect to 11.120.0.10:27017 (11.120.0.10) failed

2017-11-16T18:41:05.003+0000 reconnect 11.120.0.10:27017 (11.120.0.10) ok

tripleo:PRIMARY> rs.status()

{

"set" : "tripleo",

"date" : ISODate("2017-11-16T18:44:11Z"),

"myState" : 1,

"members" : [

{

"_id" : 3,

"name" : "11.120.0.11:27017",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY",

"uptime" : 187,

"optime" : Timestamp(1510857848, 3),

"optimeDate" : ISODate("2017-11-16T18:44:08Z"),

"lastHeartbeat" : ISODate("2017-11-16T18:44:11Z"),

"lastHeartbeatRecv" : ISODate("2017-11-16T18:44:09Z"),

"pingMs" : 0,

"syncingTo" : "11.120.0.10:27017"

},

{

"_id" : 4,

"name" : "11.120.0.10:27017",

"health" : 1,

"state" : 1,

"stateStr" : "PRIMARY",

"uptime" : 89820,

"optime" : Timestamp(1510857848, 3),

"optimeDate" : ISODate("2017-11-16T18:44:08Z"),

"electionTime" : Timestamp(1510811232, 1),

"electionDate" : ISODate("2017-11-16T05:47:12Z"),

"self" : true

}

],

"ok" : 1

}

tripleo:PRIMARY> exit

bye

- Exécutez cette commande afin de mettre à jour la liste des noeuds de contrôleur actifs. Incluez le nouveau noeud de contrôleur dans cette liste :

[root@pod1-controller-0 ~]# sudo pcs resource update galera wsrep_cluster_address=gcomm://pod1-controller-0,pod1-controller-1,pod1-controller-2

- Copiez ces fichiers d'un contrôleur qui existe déjà vers le nouveau contrôleur :

/etc/sysconfig/clustercheck

/root/.my.cnf

On existing controller:

[root@pod1-controller-0 ~]# scp /etc/sysconfig/clustercheck stack@192.200.0.1:/tmp/.

[root@pod1-controller-0 ~]# scp /root/.my.cnf stack@192.200.0.1:/tmp/my.cnf

On new controller:

[root@pod1-controller-3 ~]# cd /etc/sysconfig

[root@pod1-controller-3 sysconfig]# scp stack@192.200.0.1:/tmp/clustercheck .

[root@pod1-controller-3 sysconfig]# cd /root

[root@pod1-controller-3 ~]# scp stack@192.200.0.1:/tmp/my.cnf .my.cnf

- Exécutez la commande cluster node add à partir de l'un des contrôleurs qui existe déjà :

[root@pod1-controller-1 ~]# sudo pcs cluster node add pod1-controller-3

Disabling SBD service...

pod1-controller-3: sbd disabled

pod1-controller-0: Corosync updated

pod1-controller-1: Corosync updated

Setting up corosync...

pod1-controller-3: Succeeded

Synchronizing pcsd certificates on nodes pod1-controller-3...

pod1-controller-3: Success

Restarting pcsd on the nodes in order to reload the certificates...

pod1-controller-3: Success

- Connectez-vous à chaque contrôleur et affichez le fichier /etc/corosync/corosync.conf. Assurez-vous que le nouveau contrôleur est répertorié et que l'ID de noeud attribué à ce contrôleur est le numéro suivant de la séquence qui n'a pas été utilisé précédemment. Assurez-vous que cette modification est effectuée sur les 3 contrôleurs :

[root@pod1-controller-1 ~]# cat /etc/corosync/corosync.conf

totem {

version: 2

secauth: off

cluster_name: tripleo_cluster

transport: udpu

token: 10000

}

nodelist {

node {

ring0_addr: pod1-controller-0

nodeid: 5

}

node {

ring0_addr: pod1-controller-1

nodeid: 7

}

node {

ring0_addr: pod1-controller-3

nodeid: 6

}

}

quorum {

provider: corosync_votequorum

}

logging {

to_logfile: yes

logfile: /var/log/cluster/corosync.log

to_syslog: yes

}

Par exemple, /etc/corosync/corosync.conf après modification :

totem {

version: 2

secauth: off

cluster_name: tripleo_cluster

transport: udpu

token: 10000

}

nodelist {

node {

ring0_addr: pod1-controller-0

nodeid: 5

}

node {

ring0_addr: pod1-controller-1

nodeid: 7

}

node {

ring0_addr: pod1-controller-3

nodeid: 9

}

}

quorum {

provider: corosync_votequorum

}

logging {

to_logfile: yes

logfile: /var/log/cluster/corosync.log

to_syslog: yes

}

- Redémarrez corosync sur les contrôleurs actifs. Ne démarrez pas corosync sur le nouveau contrôleur :

[root@pod1-controller-0 ~]# sudo pcs cluster reload corosync

[root@pod1-controller-1 ~]# sudo pcs cluster reload corosync

- Démarrez le nouveau noeud de contrôleur à partir de l'un des contrôleurs actifs :

[root@pod1-controller-1 ~]# sudo pcs cluster start pod1-controller-3

- Redémarrez Galera à partir de l'un des contrôleurs actifs :

[root@pod1-controller-1 ~]# sudo pcs cluster start pod1-controller-3

pod1-controller-0: Starting Cluster...

[root@pod1-controller-1 ~]# sudo pcs resource cleanup galera

Cleaning up galera:0 on pod1-controller-0, removing fail-count-galera

Cleaning up galera:0 on pod1-controller-1, removing fail-count-galera

Cleaning up galera:0 on pod1-controller-3, removing fail-count-galera

* The configuration prevents the cluster from stopping or starting 'galera-master' (unmanaged)

Waiting for 3 replies from the CRMd... OK

[root@pod1-controller-1 ~]#

[root@pod1-controller-1 ~]# sudo pcs resource manage galera

- Le cluster est en mode maintenance. Désactivez le mode maintenance afin d'obtenir le démarrage des services :

[root@pod1-controller-2 ~]# sudo pcs property set maintenance-mode=false --wait

- Vérifiez l'état des PC pour Galera jusqu'à ce que les 3 contrôleurs soient répertoriés comme maîtres dans Galera :

Remarque : Pour les configurations de grande envergure, la synchronisation des bases de données peut prendre un certain temps.

[root@pod1-controller-1 ~]# sudo pcs status | grep galera -A1

Master/Slave Set: galera-master [galera]

Masters: [ pod1-controller-0 pod1-controller-1 pod1-controller-3 ]

- Passez le cluster en mode maintenance :

[root@pod1-controller-1~]# sudo pcs property set maintenance-mode=true --wait

[root@pod1-controller-1 ~]# pcs cluster status

Cluster Status:

Stack: corosync

Current DC: pod1-controller-0 (version 1.1.15-11.el7_3.4-e174ec8) - partition with quorum

Last updated: Thu Nov 16 19:17:01 2017 Last change: Thu Nov 16 19:16:48 2017 by root via cibadmin on pod1-controller-1

*** Resource management is DISABLED ***

The cluster will not attempt to start, stop or recover services

PCSD Status:

pod1-controller-3: Online

pod1-controller-0: Online

pod1-controller-1: Online

- Réexécutez le script de déploiement que vous avez exécuté précédemment. Cette fois-ci, elle devrait réussir.

[stack@director ~]$ ./deploy-addController.sh

START with options: [u'overcloud', u'deploy', u'--templates', u'-r', u'/home/stack/custom-templates/custom-roles.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/puppet-pacemaker.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/network-isolation.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/storage-environment.yaml', u'-e', u'/usr/share/openstack-tripleo-heat-templates/environments/neutron-sriov.yaml', u'-e', u'/home/stack/custom-templates/network.yaml', u'-e', u'/home/stack/custom-templates/ceph.yaml', u'-e', u'/home/stack/custom-templates/compute.yaml', u'-e', u'/home/stack/custom-templates/layout-removeController.yaml', u'--stack', u'newtonoc', u'--debug', u'--log-file', u'overcloudDeploy_11_14_17__13_53_12.log', u'--neutron-flat-networks', u'phys_pcie1_0,phys_pcie1_1,phys_pcie4_0,phys_pcie4_1', u'--neutron-network-vlan-ranges', u'datacentre:101:200', u'--neutron-disable-tunneling', u'--verbose', u'--timeout', u'180']

options: Namespace(access_key='', access_secret='***', access_token='***', access_token_endpoint='', access_token_type='', aodh_endpoint='', auth_type='', auth_url='https://192.200.0.2:13000/v2.0', authorization_code='', cacert=None, cert='', client_id='', client_secret='***', cloud='', consumer_key='', consumer_secret='***', debug=True, default_domain='default', default_domain_id='', default_domain_name='', deferred_help=False, discovery_endpoint='', domain_id='', domain_name='', endpoint='', identity_provider='', identity_provider_url='', insecure=None, inspector_api_version='1', inspector_url=None, interface='', key='', log_file=u'overcloudDeploy_11_14_17__13_53_12.log', murano_url='', old_profile=None, openid_scope='', os_alarming_api_version='2', os_application_catalog_api_version='1', os_baremetal_api_version='1.15', os_beta_command=False, os_compute_api_version='', os_container_infra_api_version='1', os_data_processing_api_version='1.1', os_data_processing_url='', os_dns_api_version='2', os_identity_api_version='', os_image_api_version='1', os_key_manager_api_version='1', os_metrics_api_version='1', os_network_api_version='', os_object_api_version='', os_orchestration_api_version='1', os_project_id=None, os_project_name=None, os_queues_api_version='2', os_tripleoclient_api_version='1', os_volume_api_version='', os_workflow_api_version='2', passcode='', password='***', profile=None, project_domain_id='', project_domain_name='', project_id='', project_name='admin', protocol='', redirect_uri='', region_name='', roles='', timing=False, token='***', trust_id='', url='', user='', user_domain_id='', user_domain_name='', user_id='', username='admin', verbose_level=3, verify=None)

Auth plugin password selected

Starting new HTTPS connection (1): 192.200.0.2

"POST /v2/action_executions HTTP/1.1" 201 1696

HTTP POST https://192.200.0.2:13989/v2/action_executions 201

Overcloud Endpoint: http://172.25.22.109:5000/v2.0

Overcloud Deployed

clean_up DeployOvercloud:

END return value: 0

real 54m17.197s

user 0m3.421s

sys 0m0.670s

Vérification des services de surcloud dans le contrôleur

- Assurez-vous que tous les services gérés s'exécutent correctement sur les noeuds du contrôleur.

[heat-admin@pod1-controller-2 ~]$ sudo pcs status

Finalisation des routeurs d'agent de couche 3

Vérifiez les routeurs afin de vous assurer que les agents L3 sont correctement hébergés. Assurez-vous de générer le fichier overcloud lorsque vous effectuez cette vérification.

- Recherchez le nom du routeur :

[stack@director~]$ source corerc

[stack@director ~]$ neutron router-list

+--------------------------------------+------+-------------------------------------------------------------------+-------------+------+

| id | name | external_gateway_info | distributed | ha |

+--------------------------------------+------+-------------------------------------------------------------------+-------------+------+

| d814dc9d-2b2f-496f-8c25-24911e464d02 | main | {"network_id": "18c4250c-e402-428c-87d6-a955157d50b5", | False | True |

Dans cet exemple, le nom du routeur est main.

- Répertoriez tous les agents L3 afin de trouver l'UUID du noeud défaillant et du nouveau noeud :

[stack@director ~]$ neutron agent-list | grep "neutron-l3-agent"

| 70242f5c-43ab-4355-abd6-9277f92e4ce6 | L3 agent | pod1-controller-0.localdomain | nova | :-) | True | neutron-l3-agent |

| 8d2ffbcb-b6ff-42cd-b5b8-da31d8da8a40 | L3 agent | pod1-controller-2.localdomain | nova | xxx | True | neutron-l3-agent |

| a410a491-e271-4938-8a43-458084ffe15d | L3 agent | pod1-controller-3.localdomain | nova | :-) | True | neutron-l3-agent |

| cb4bc1ad-ac50-42e9-ae69-8a256d375136 | L3 agent | pod1-controller-1.localdomain | nova | :-) | True | neutron-l3-agent |

- Dans cet exemple, l'agent L3 qui correspond à pod1-controller-2.localdomain doit être supprimé du routeur et celui qui correspond à pod1-controller-3.localdomain doit être ajouté au routeur :

[stack@director ~]$ neutron l3-agent-router-remove 8d2ffbcb-b6ff-42cd-b5b8-da31d8da8a40 main

Removed router main from L3 agent

[stack@director ~]$ neutron l3-agent-router-add a410a491-e271-4938-8a43-458084ffe15d main

Added router main to L3 agent

- Vérifiez la liste mise à jour des agents de couche 3 :

[stack@director ~]$ neutron l3-agent-list-hosting-router main

+--------------------------------------+-----------------------------------+----------------+-------+----------+

| id | host | admin_state_up | alive | ha_state |

+--------------------------------------+-----------------------------------+----------------+-------+----------+

| 70242f5c-43ab-4355-abd6-9277f92e4ce6 | pod1-controller-0.localdomain | True | :-) | standby |

| a410a491-e271-4938-8a43-458084ffe15d | pod1-controller-3.localdomain | True | :-) | standby |

| cb4bc1ad-ac50-42e9-ae69-8a256d375136 | pod1-controller-1.localdomain | True | :-) | active |

+--------------------------------------+-----------------------------------+----------------+-------+----------+

- Répertoriez tous les services qui s'exécutent à partir du noeud contrôleur supprimé et supprimez-les :

[stack@director ~]$ neutron agent-list | grep controller-2

| 877314c2-3c8d-4666-a6ec-69513e83042d | Metadata agent | pod1-controller-2.localdomain | | xxx | True | neutron-metadata-agent |

| 8d2ffbcb-b6ff-42cd-b5b8-da31d8da8a40 | L3 agent | pod1-controller-2.localdomain | nova | xxx | True | neutron-l3-agent |

| 911c43a5-df3a-49ec-99ed-1d722821ec20 | DHCP agent | pod1-controller-2.localdomain | nova | xxx | True | neutron-dhcp-agent |

| a58a3dd3-4cdc-48d4-ab34-612a6cd72768 | Open vSwitch agent | pod1-controller-2.localdomain | | xxx | True | neutron-openvswitch-agent |

[stack@director ~]$ neutron agent-delete 877314c2-3c8d-4666-a6ec-69513e83042d

Deleted agent(s): 877314c2-3c8d-4666-a6ec-69513e83042d

[stack@director ~]$ neutron agent-delete 8d2ffbcb-b6ff-42cd-b5b8-da31d8da8a40

Deleted agent(s): 8d2ffbcb-b6ff-42cd-b5b8-da31d8da8a40

[stack@director ~]$ neutron agent-delete 911c43a5-df3a-49ec-99ed-1d722821ec20

Deleted agent(s): 911c43a5-df3a-49ec-99ed-1d722821ec20

[stack@director ~]$ neutron agent-delete a58a3dd3-4cdc-48d4-ab34-612a6cd72768

Deleted agent(s): a58a3dd3-4cdc-48d4-ab34-612a6cd72768

[stack@director ~]$ neutron agent-list | grep controller-2

[stack@director ~]$

Finalisation des services informatiques

- Vérifiez les éléments nova service-list restants du noeud supprimé et supprimez-les :

[stack@director ~]$ nova service-list | grep controller-2

| 615 | nova-consoleauth | pod1-controller-2.localdomain | internal | enabled | down | 2017-11-16T16:08:14.000000 | - |

| 618 | nova-scheduler | pod1-controller-2.localdomain | internal | enabled | down | 2017-11-16T16:08:13.000000 | - |

| 621 | nova-conductor | pod1-controller-2.localdomain | internal | enabled | down | 2017-11-16T16:08:14.000000 | -

[stack@director ~]$ nova service-delete 615

[stack@director ~]$ nova service-delete 618

[stack@director ~]$ nova service-delete 621

stack@director ~]$ nova service-list | grep controller-2

- Assurez-vous que le processus consoleauth s'exécute sur tous les contrôleurs ou redémarrez-le à l'aide de cette commande : redémarrage de la ressource pcs openstack-nova-consoleauth :

[stack@director ~]$ nova service-list | grep consoleauth

| 601 | nova-consoleauth | pod1-controller-0.localdomain | internal | enabled | up | 2017-11-16T21:00:10.000000 | - |

| 608 | nova-consoleauth | pod1-controller-1.localdomain | internal | enabled | up | 2017-11-16T21:00:13.000000 | - |

| 622 | nova-consoleauth | pod1-controller-3.localdomain | internal | enabled | up | 2017-11-16T21:00:13.000000 | -

Redémarrer la délimitation sur les noeuds du contrôleur

- Recherchez la route IP vers le sous-cloud 192.0.0.0/8 sur tous les contrôleurs :

[root@pod1-controller-3 ~]# ip route

default via 172.25.22.1 dev vlan101

11.117.0.0/24 dev vlan17 proto kernel scope link src 11.117.0.12

11.118.0.0/24 dev vlan18 proto kernel scope link src 11.118.0.12

11.119.0.0/24 dev vlan19 proto kernel scope link src 11.119.0.12

11.120.0.0/24 dev vlan20 proto kernel scope link src 11.120.0.12

169.254.169.254 via 192.200.0.1 dev eno1

172.25.22.0/24 dev vlan101 proto kernel scope link src 172.25.22.102

192.0.0.0/8 dev eno1 proto kernel scope link src 192.200.0.103

- Vérifiez la configuration actuelle de la pierre. Supprimez toute référence à l'ancien noeud contrôleur :

[root@pod1-controller-3 ~]# sudo pcs stonith show --full

Resource: my-ipmilan-for-controller-6 (class=stonith type=fence_ipmilan)

Attributes: pcmk_host_list=pod1-controller-1 ipaddr=192.100.0.1 login=admin passwd=Csco@123Starent lanplus=1

Operations: monitor interval=60s (my-ipmilan-for-controller-6-monitor-interval-60s)

Resource: my-ipmilan-for-controller-4 (class=stonith type=fence_ipmilan)

Attributes: pcmk_host_list=pod1-controller-0 ipaddr=192.100.0.14 login=admin passwd=Csco@123Starent lanplus=1

Operations: monitor interval=60s (my-ipmilan-for-controller-4-monitor-interval-60s)

Resource: my-ipmilan-for-controller-7 (class=stonith type=fence_ipmilan)

Attributes: pcmk_host_list=pod1-controller-2 ipaddr=192.100.0.15 login=admin passwd=Csco@123Starent lanplus=1

Operations: monitor interval=60s (my-ipmilan-for-controller-7-monitor-interval-60s)

[root@pod1-controller-3 ~]# pcs stonith delete my-ipmilan-for-controller-7

Attempting to stop: my-ipmilan-for-controller-7...Stopped

- Ajouter une configuration robuste pour le nouveau contrôleur :

[root@pod1-controller-3 ~]sudo pcs stonith create my-ipmilan-for-controller-8 fence_ipmilan pcmk_host_list=pod1-controller-3 ipaddr=<CIMC_IP> login=admin passwd=<PASSWORD> lanplus=1 op monitor interval=60s

- Redémarrez la délimitation à partir de n'importe quel contrôleur et vérifiez l'état :

[root@pod1-controller-1 ~]# sudo pcs property set stonith-enabled=true

[root@pod1-controller-3 ~]# pcs status

<snip>

my-ipmilan-for-controller-1 (stonith:fence_ipmilan): Started pod1-controller-3

my-ipmilan-for-controller-0 (stonith:fence_ipmilan): Started pod1-controller-3

my-ipmilan-for-controller-3 (stonith:fence_ipmilan): Started pod1-controller-3

Paramètres de remplacement post-serveur

Reportez-vous au lien ci-dessous pour appliquer les paramètres précédemment présents dans l'ancien serveur :

Contribution d’experts de Cisco

- Padmaraj RamanoudjamServices avancés Cisco

- Partheeban RajagopalServices avancés Cisco

Contacter Cisco

- Ouvrir un dossier d’assistance

- (Un contrat de service de Cisco est requis)

Commentaires

Commentaires